

- Dell SCv3000 and SCv3020 Storage System Deployment Guide

- About This Guide

- About the SCv3000 and SCv3020 Storage System

- Install the Storage Center Hardware

- Connect the Front-End Cabling

- Types of Redundancy for Front-End Connections

- Connecting to Host Servers with Fibre Channel HBAs

- Connecting to Host Servers with iSCSI HBAs or Network Adapters

- Connecting to Host Servers with SAS HBAs

- Attach Host Servers (Fibre Channel)

- Attach the Host Servers (iSCSI)

- Attach the Host Servers (SAS)

- Connect the Management Ports to the Management Network

- Connect the Back-End Cabling

- Discover and Configure the Storage Center

- Connect Power Cables and Turn On the Storage System

- Locate Your Service Tag

- Record System Information

- Supported Operating Systems for Storage Center Automated Setup

- Install and Use the Dell Storage Manager

- Discover and Select an Uninitialized Storage Center

- Deploy the Storage Center Using the Direct Connect Method

- Customer Installation Authorization

- Set System Information

- Set Administrator Information

- Confirm the Storage Center Configuration

- Initialize the Storage Center

- Configure Key Management Server Settings

- Create a Storage Type

- Configure Ports

- Configure Time Settings

- Configure SMTP Server Settings

- Using Dell SupportAssist

- Update the Storage Center

- Complete the Configuration and Continue With Setup

- Perform Post-Setup Tasks

- Adding or Removing Expansion Enclosures

- Adding Expansion Enclosures to a Storage System Deployed Without Expansion Enclosures

- Install New SCv300 and SCv320 Expansion Enclosures in a Rack

- Add the SCv300 and SCv320 Expansion Enclosures to the A-Side of the Chain

- Add the SCv300 and SCv320 Expansion Enclosures to the B-Side of the Chain

- Install New SCv360 Expansion Enclosures in a Rack

- Add the SCv360 Expansion Enclosures to the A-Side of the Chain

- Add an SCv360 Expansion Enclosure to the B-Side of the Chain

- Adding a Single Expansion Enclosure to a Chain Currently in Service

- Removing an Expansion Enclosure from a Chain Currently in Service

- Release the Drives in the Expansion Enclosure

- Disconnect the SCv300 and SCv320 Expansion Enclosure from the A-Side of the Chain

- Disconnect the SCv300 and SCv320 Expansion Enclosure from the B-Side of the Chain

- Disconnect the SCv360 Expansion Enclosure from the A-Side of the Chain

- Disconnect the SCv360 Expansion Enclosure from the B-Side of the Chain

- Troubleshooting Storage Center Deployment

- Set Up a Local Host or VMware Host

- Initialize the Storage Center Using the USB Serial Port

- Worksheet to Record System Information

- HBA Server Settings

- iSCSI Settings

Dell SCv3000 and SCv3020 Storage System Deployment Guide

Notes, Cautions, and Warnings

NOTE: A NOTE indicates important information that helps you make better use of your product.

CAUTION: A CAUTION indicates either potential damage to hardware or loss of data and tells you how to avoid the problem.

WARNING: A WARNING indicates a potential for property damage, personal injury, or death.

Copyright © 2017 Dell Inc. or its subsidiaries. All rights reserved. Dell, EMC, and other trademarks are trademarks of Dell Inc. or its subsidiaries. Other trademarks may be trademarks of their respective owners.

2017 - 11

Rev. B

Contents

About This Guide

Revision History

Audience

Contacting Dell

Related Publications

1 About the SCv3000 and SCv3020 Storage System

Storage Center Hardware Components

SCv3000 and SCv3020 Storage System

Expansion Enclosures

Switches

Storage Center Communication

Front-End Connectivity

Back-End Connectivity

System Administration

Storage Center Replication

SCv3000 and SCv3020 Storage System Hardware

SCv3000 and SCv3020 Storage System Front-Panel View

SCv3000 and SCv3020 Storage System Back-Panel View

Expansion Enclosure Overview

2 Install the Storage Center Hardware

Unpacking Storage Center Equipment

Safety Precautions

Installation Safety Precautions

Electrical Safety Precautions

Electrostatic Discharge Precautions

General Safety Precautions

Prepare the Installation Environment

Install the Storage System in a Rack

3 Connect the Front-End Cabling.....................................................................................32

Types of Redundancy for Front-End Connections............................................................................................................ 32

Port Redundancy........................................................................................................................................................32

Storage Controller Redundancy..................................................................................................................................32

Connecting to Host Servers with Fibre Channel HBAs..................................................................................................... 33

Fibre Channel Zoning..................................................................................................................................................33

Cable the Storage System with 2-Port Fibre Channel IO Cards..................................................................................34

Cable the Storage System with 4-Port Fibre Channel IO Cards..................................................................................35

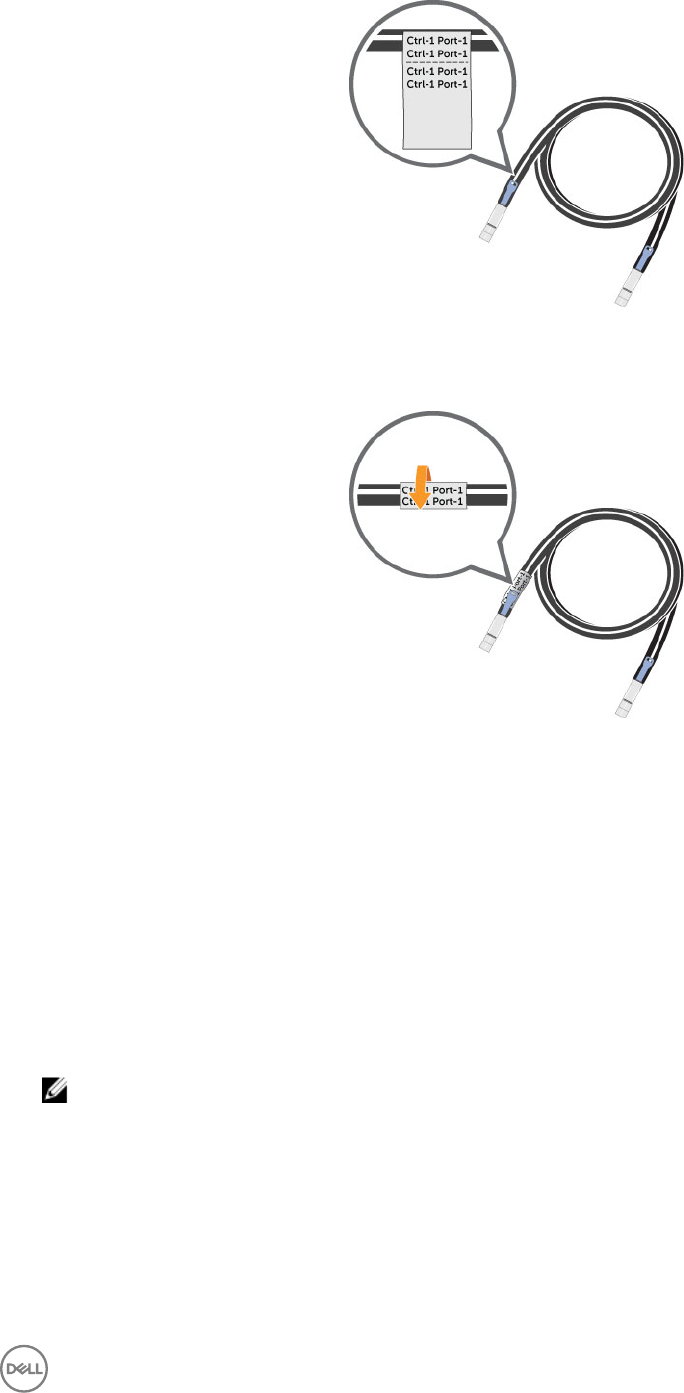

Labeling the Front-End Cables................................................................................................................................... 35

Connecting to Host Servers with iSCSI HBAs or Network Adapters.................................................................................37

Cable the Storage System with 2–Port iSCSI IO Cards.............................................................................................. 37

Cable the Storage System with 4–Port iSCSI IO Cards..............................................................................................38

3

Connect a Storage System to a Host Server Using an iSCSI Mezzanine Card........................................................... 39

Labeling the Front-End Cables................................................................................................................................... 40

Connecting to Host Servers with SAS HBAs.....................................................................................................................41

Cable the Storage System with 4-Port SAS HBAs to Host Servers with One SAS HBA per Server............................41

Labeling the Front-End Cables................................................................................................................................... 42

Attach Host Servers (Fibre Channel)............................................................................................................................... 43

Attach the Host Servers (iSCSI)...................................................................................................................................... 44

Attach the Host Servers (SAS)........................................................................................................................................ 44

Connect the Management Ports to the Management Network....................................................................................... 45

Labeling the Ethernet Management Cables................................................................................................................45

4 Connect the Back-End Cabling......................................................................................47

Expansion Enclosure Cabling Guidelines........................................................................................................................... 47

Back-End SAS Redundancy........................................................................................................................................47

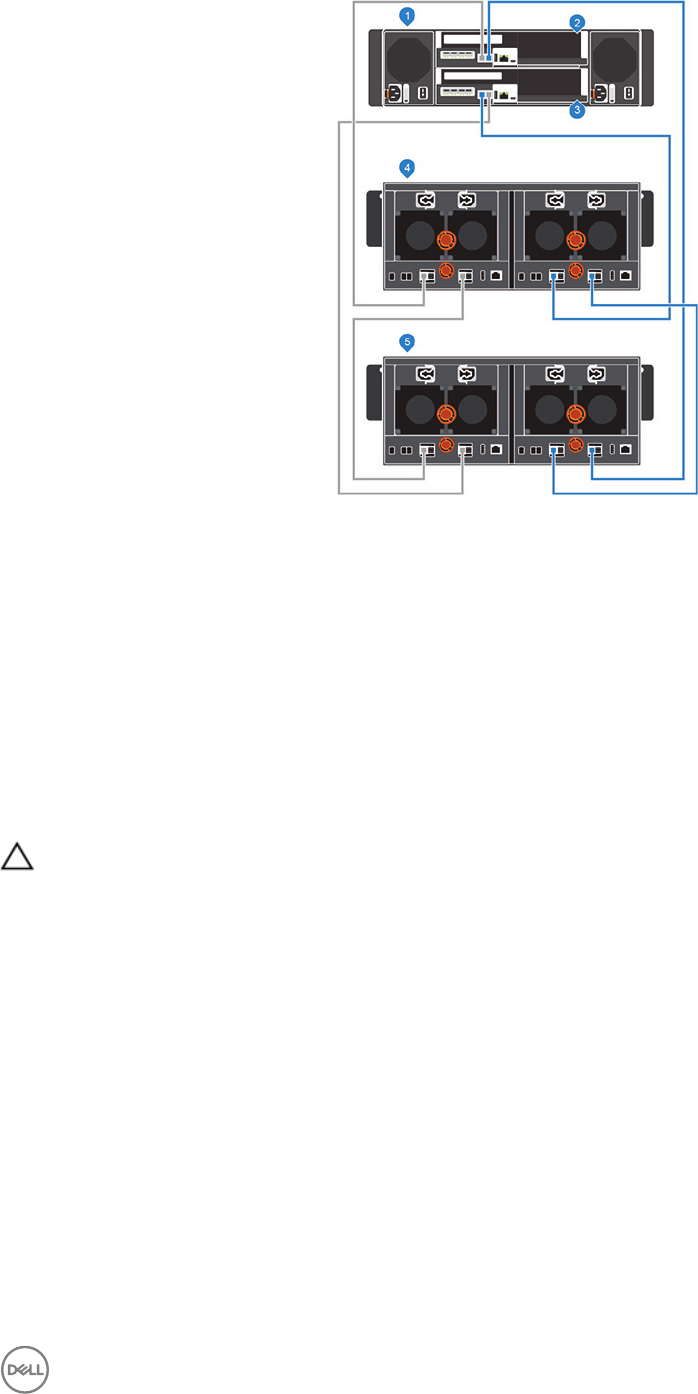

Back-End Connections for an SCv3000 and SCv3020 Storage System With Expansion Enclosures................................47

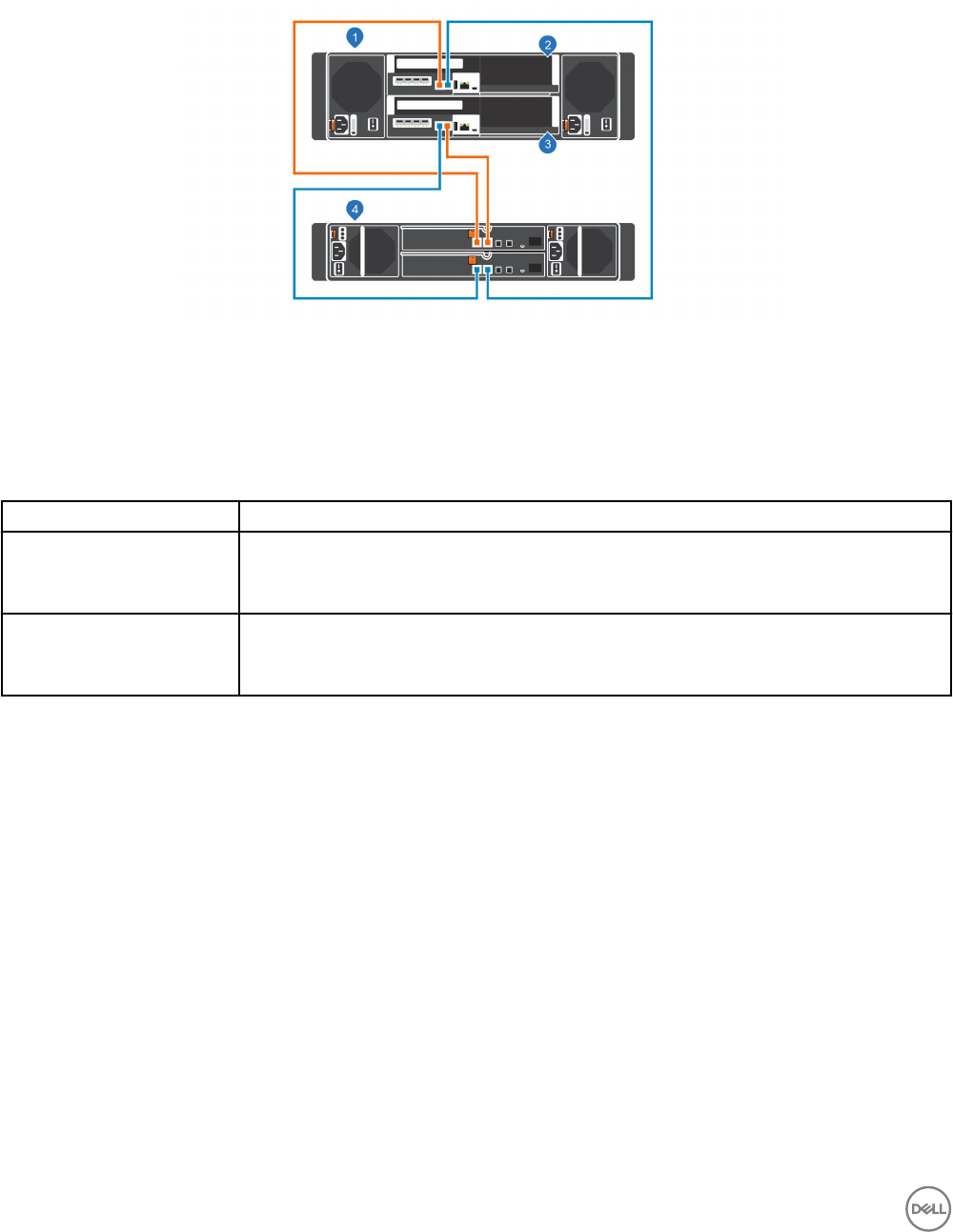

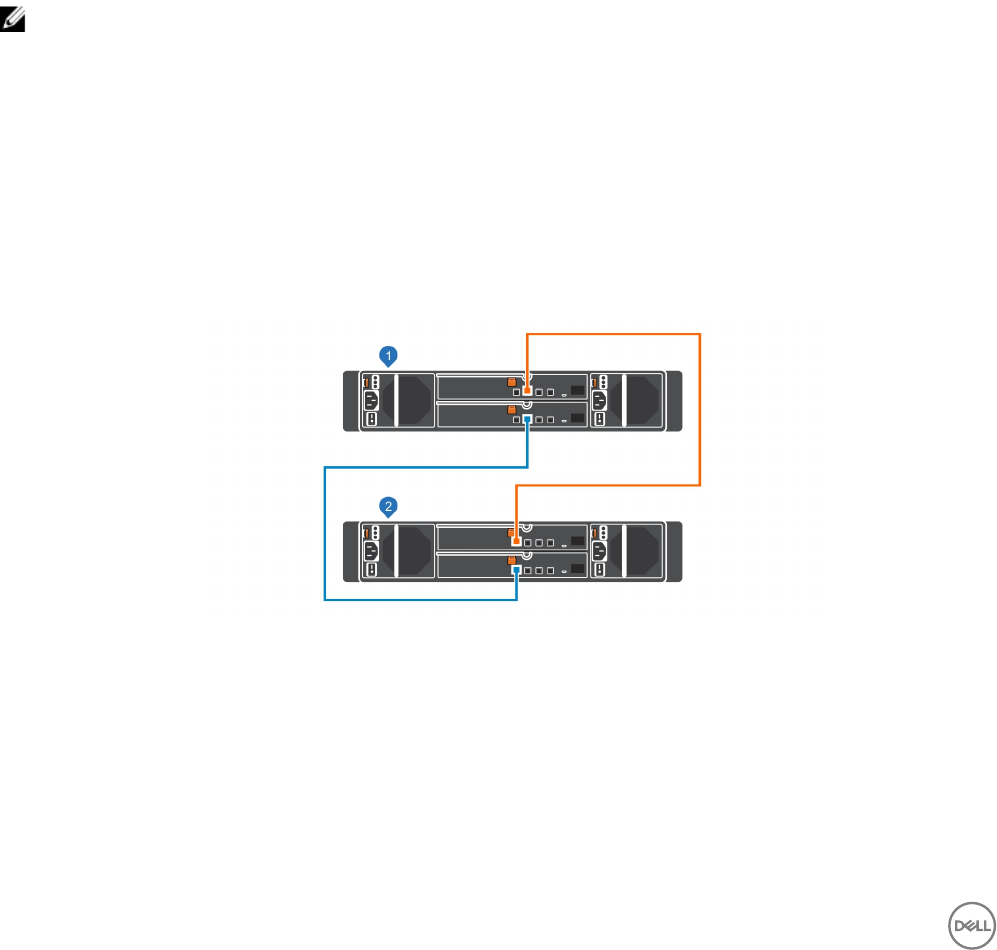

SCv3000 and SCv3020 and One SCv300 and SCv320 Expansion Enclosure............................................................ 48

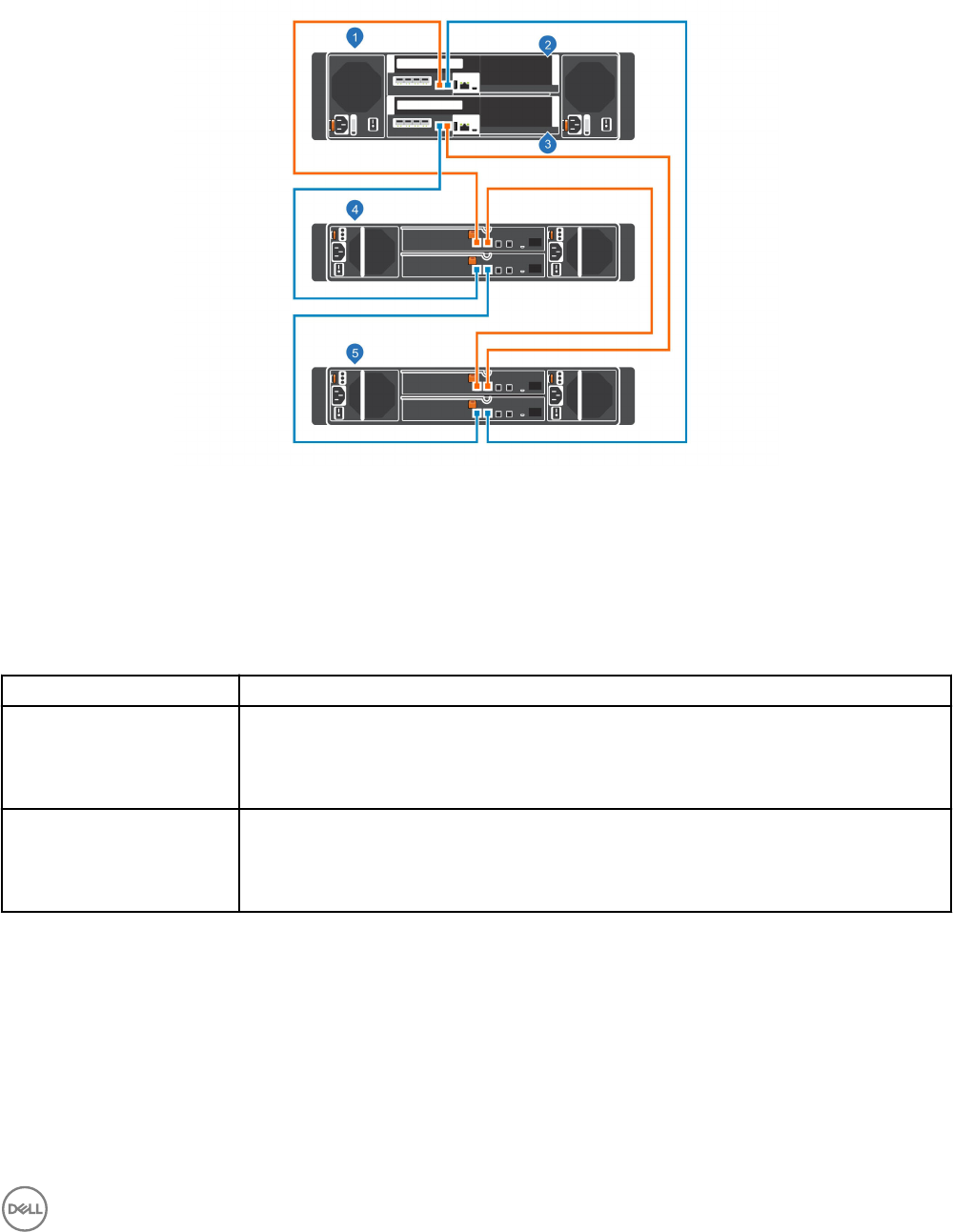

SCv3000 and SCv3020 and Two SCv300 and SCv320 Expansion Enclosures...........................................................49

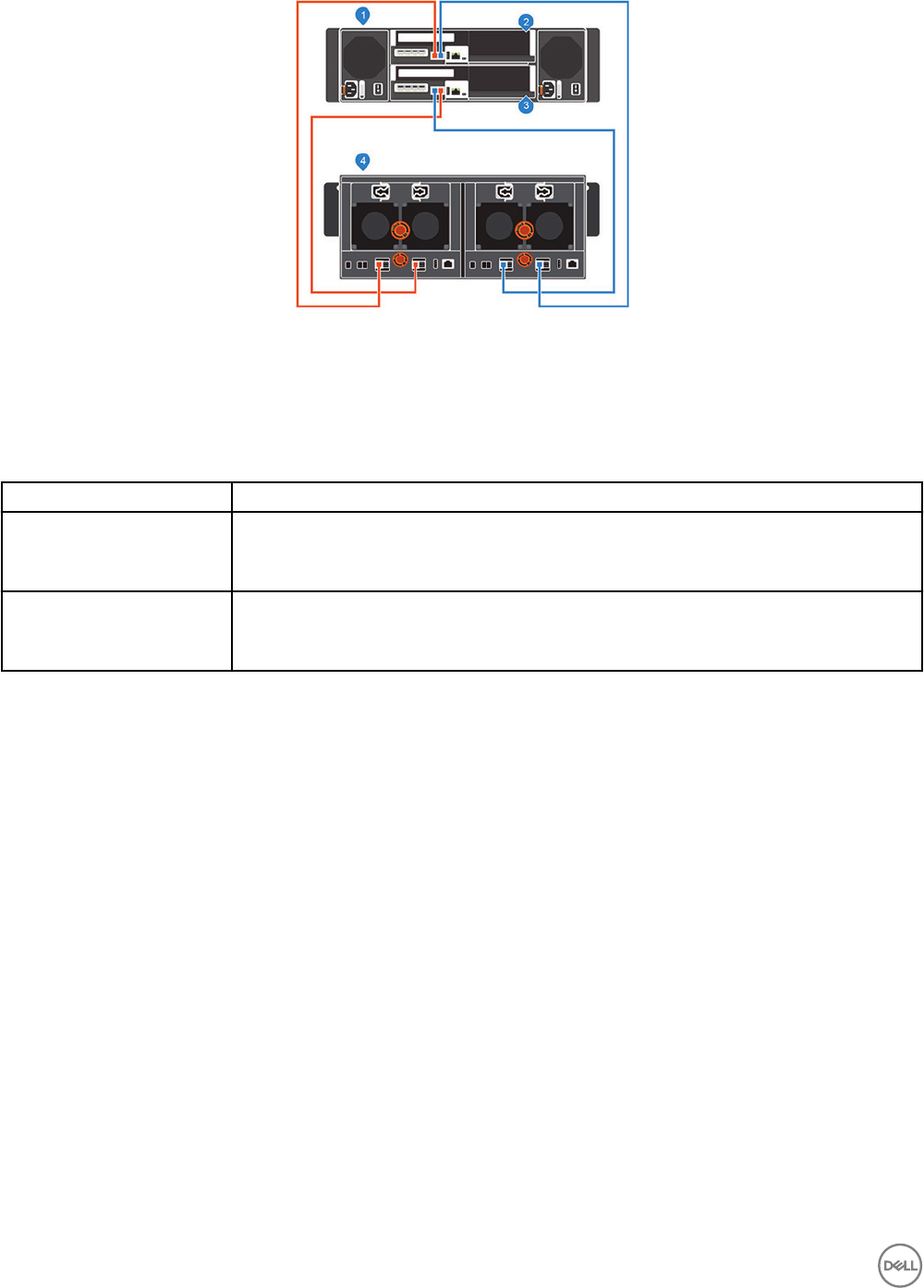

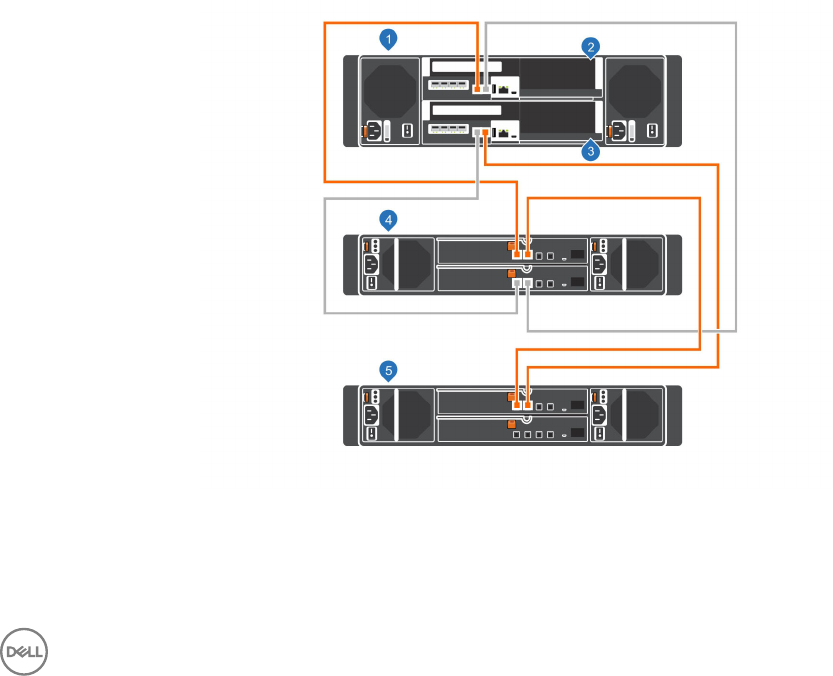

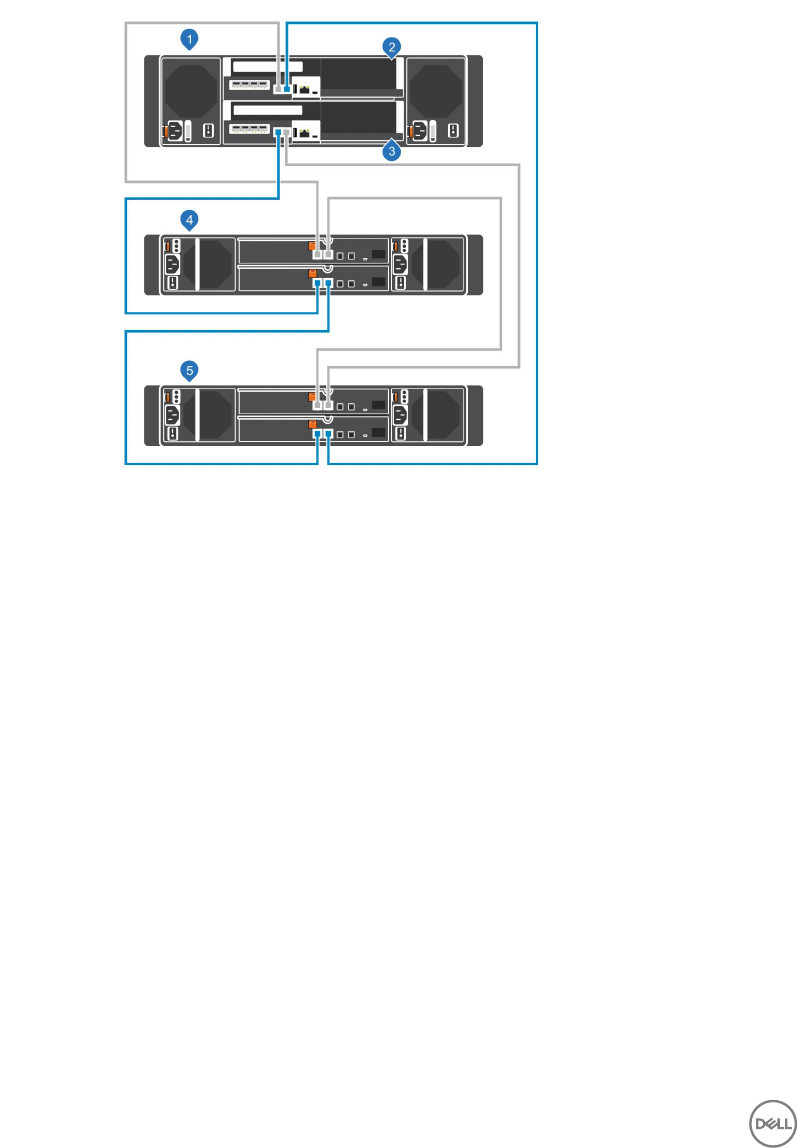

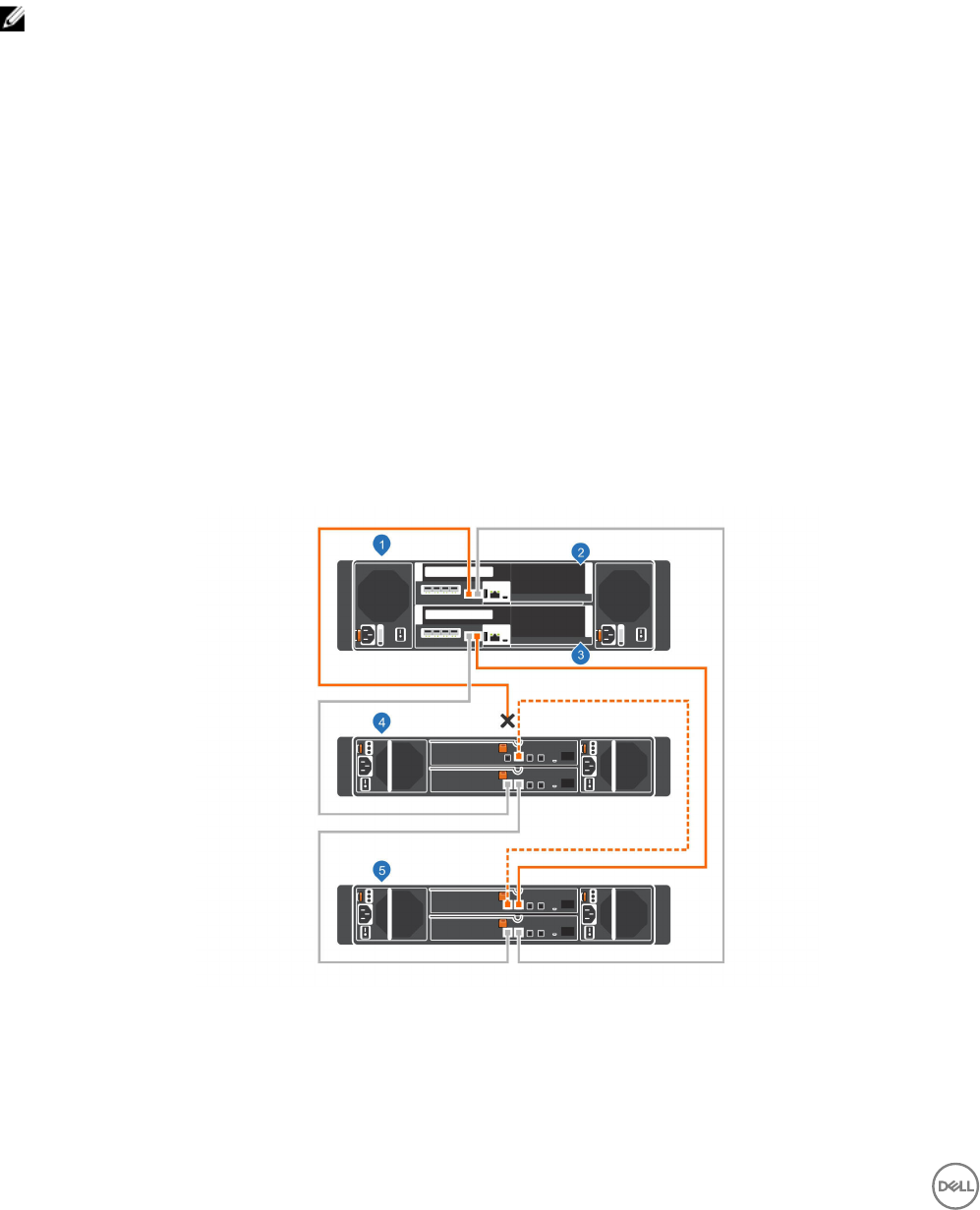

SCv3000 and SCv3020 Storage System and One SCv360 Expansion Enclosure.......................................................49

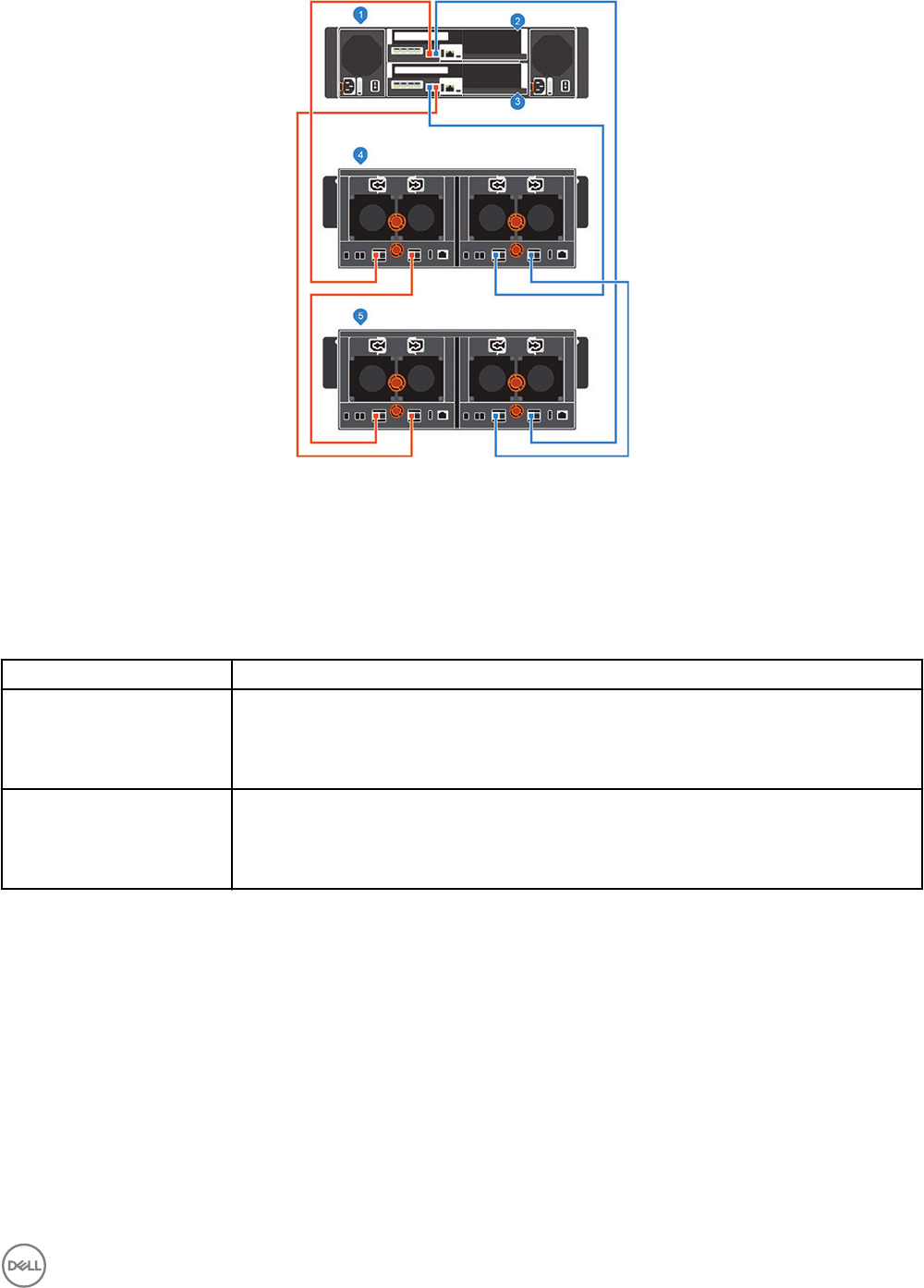

SCv3000 and SCv3020 Storage System and Two SCv360 Expansion Enclosures.....................................................50

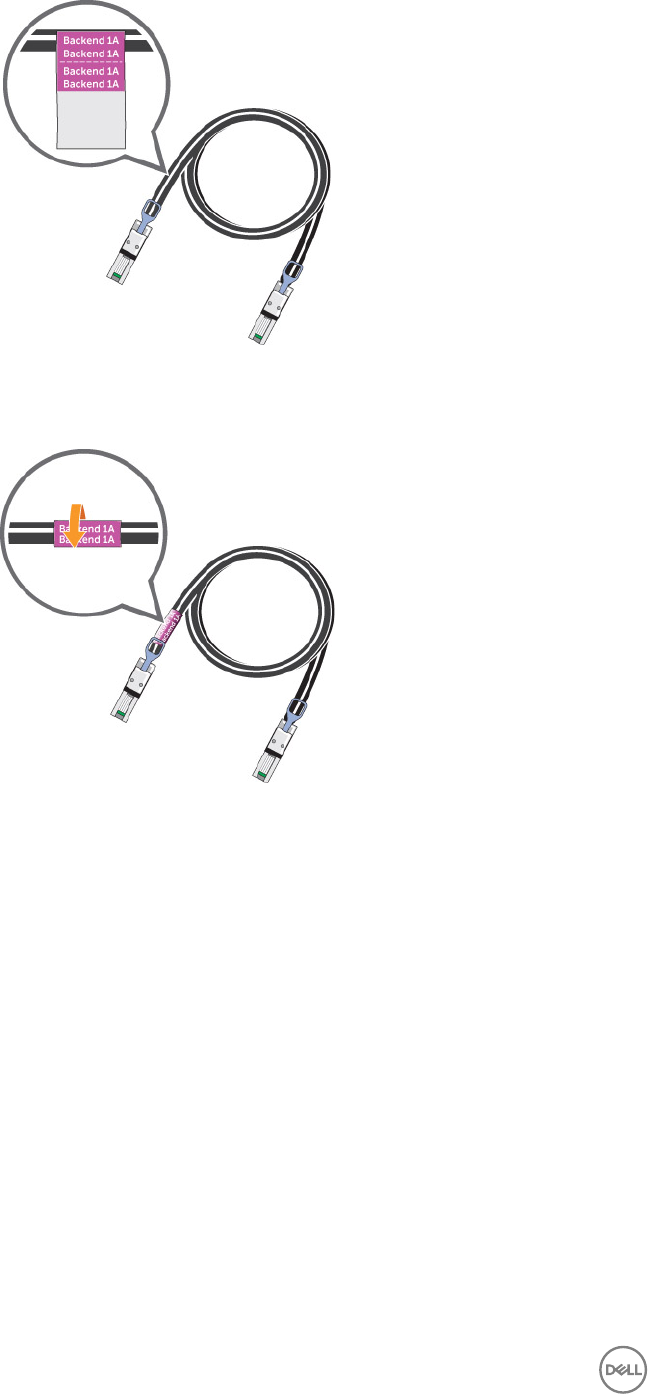

Label the Back-End Cables............................................................................................................................................... 51

5 Discover and Congure the Storage Center.................................................................. 53

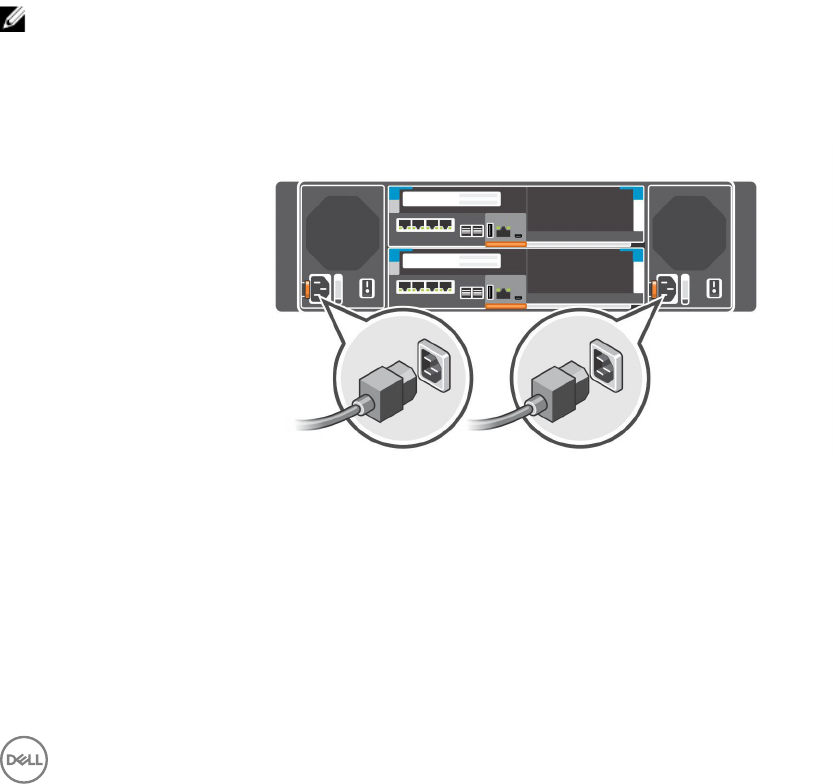

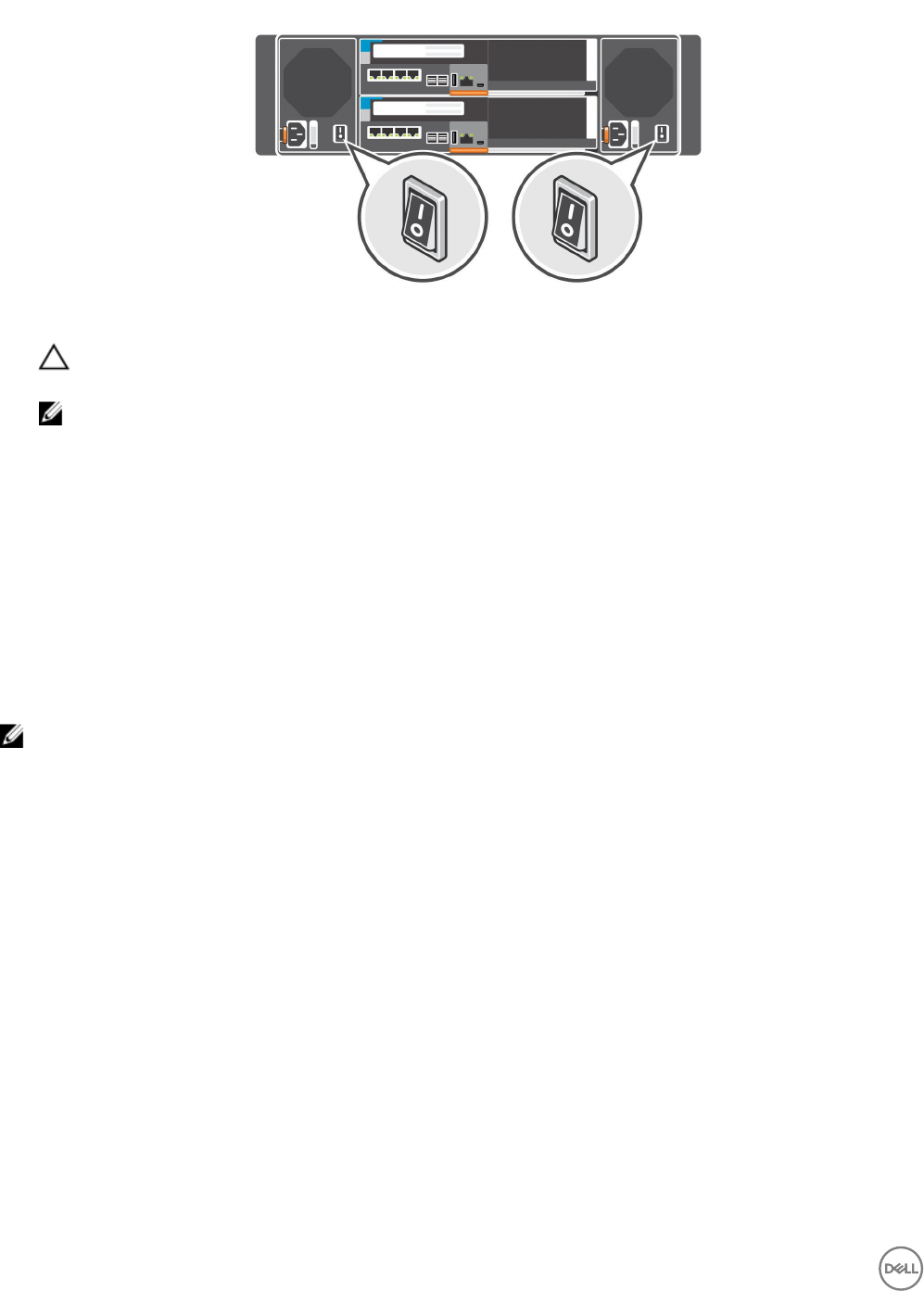

Connect Power Cables and Turn On the Storage System................................................................................................ 53

Locate Your Service Tag................................................................................................................................................... 54

Record System Information..............................................................................................................................................54

Supported Operating Systems for Storage Center Automated Setup ..............................................................................54

Install and Use the Dell Storage Manager......................................................................................................................... 54

Discover and Select an Uninitialized Storage Center........................................................................................................ 55

Deploy the Storage Center Using the Direct Connect Method.........................................................................................55

Customer Installation Authorization..................................................................................................................................56

Set System Information....................................................................................................................................................56

Set Administrator Information.......................................................................................................................................... 56

Conrm the Storage Center Conguration....................................................................................................................... 57

Initialize the Storage Center..............................................................................................................................................57

Congure Key Management Server Settings....................................................................................................................57

Create a Storage Type...................................................................................................................................................... 57

Congure Ports................................................................................................................................................................58

Congure Fibre Channel Ports................................................................................................................................... 58

Congure iSCSI Ports................................................................................................................................................ 58

Congure SAS Ports.................................................................................................................................................. 59

Congure Time Settings...................................................................................................................................................59

Congure SMTP Server Settings..................................................................................................................................... 59

Using Dell SupportAssist.................................................................................................................................................. 59

Enable SupportAssist ................................................................................................................................................ 60

Update the Storage Center...............................................................................................................................................61

Complete the Conguration and Continue With Setup......................................................................................................61

4

Modify iDRAC Interface Settings for a Storage System.............................................................................................. 61

Uncongure Unused I/O Ports................................................................................................................................... 62

6 Perform Post-Setup Tasks

Update Storage Center Using Dell Storage Manager

Check the Status of the Update

Change the Operation Mode of a Storage Center

Verify Connectivity and Failover

Create Test Volumes

Test Basic Connectivity

Test Storage Controller Failover

Test MPIO

Clean Up Test Volumes

Send Diagnostic Data Using Dell SupportAssist

7 Adding or Removing Expansion Enclosures................................................................... 66

Adding Expansion Enclosures to a Storage System Deployed Without Expansion Enclosures.......................................... 66

Install New SCv300 and SCv320 Expansion Enclosures in a Rack..............................................................................66

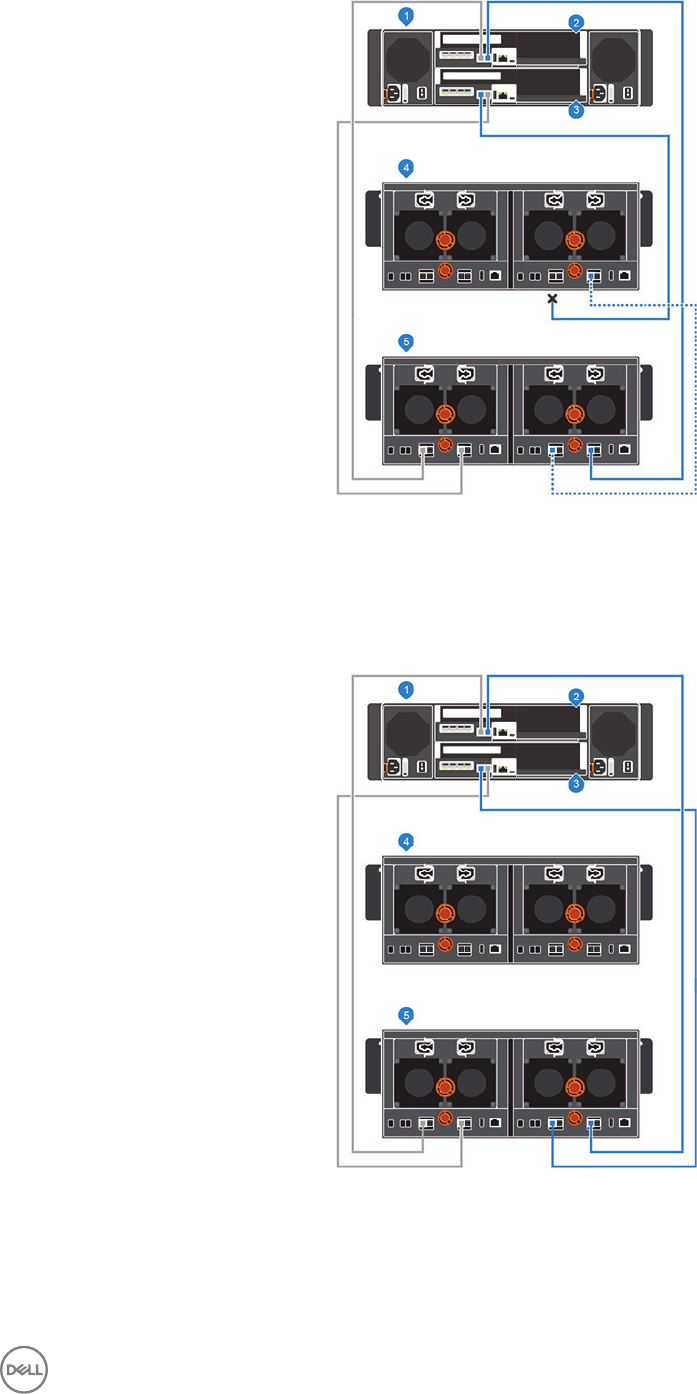

Add the SCv300 and SCv320 Expansion Enclosures to the A-Side of the Chain........................................................67

Add the SCv300 and SCv320 Expansion Enclosures to the B-Side of the Chain....................................................... 68

Install New SCv360 Expansion Enclosures in a Rack..................................................................................................68

Add the SCv360 Expansion Enclosures to the A-Side of the Chain............................................................................69

Add an SCv360 Expansion Enclosure to the B-Side of the Chain...............................................................................70

Adding a Single Expansion Enclosure to a Chain Currently in Service............................................................................... 72

Check the Drive Count............................................................................................................................................... 72

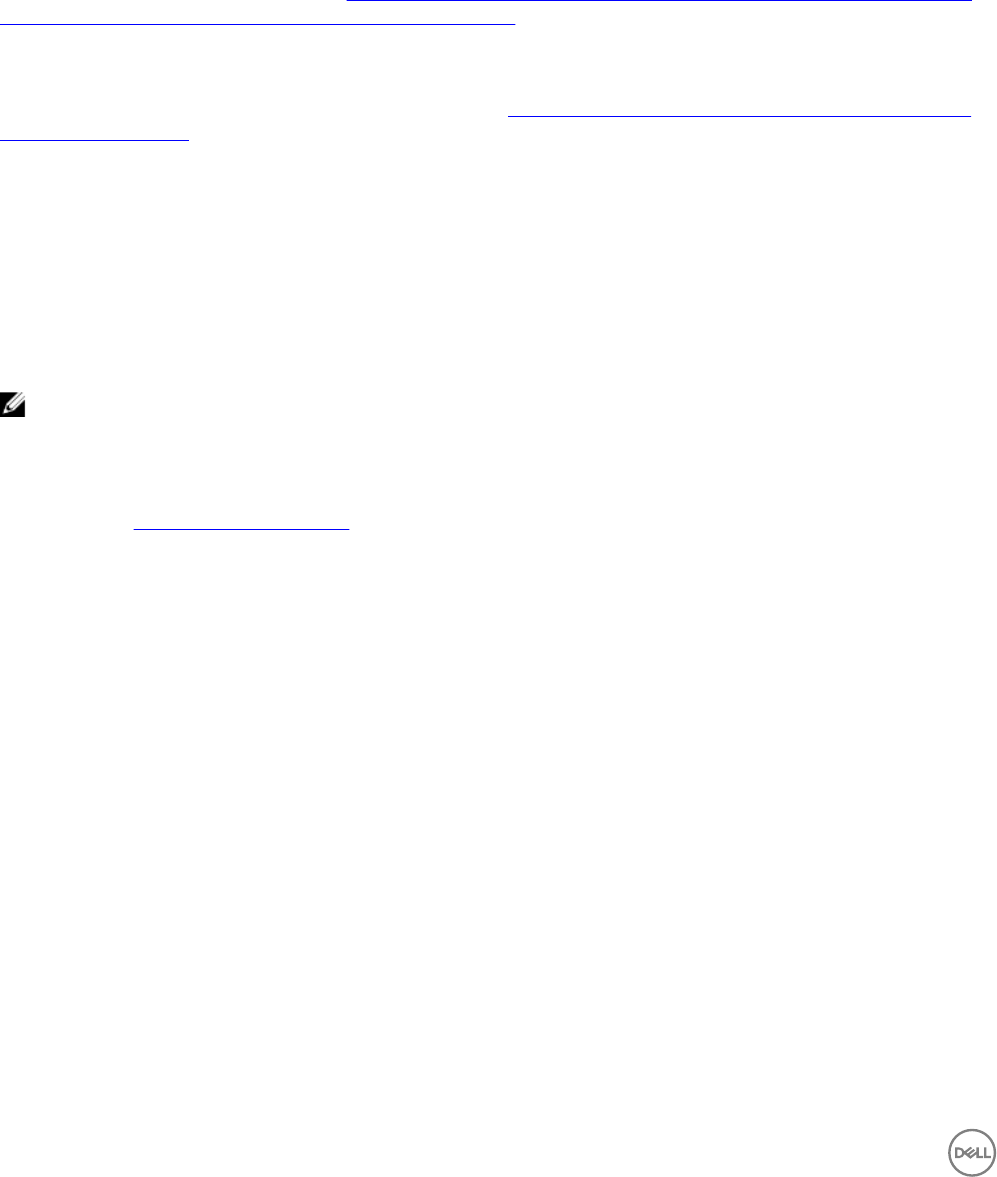

Add an SCv300 and SCv320 Expansion Enclosure to the A-Side of the Chain...........................................................72

Add an SCv300 and SCv320 Expansion Enclosure to the B-Side of the Chain...........................................................73

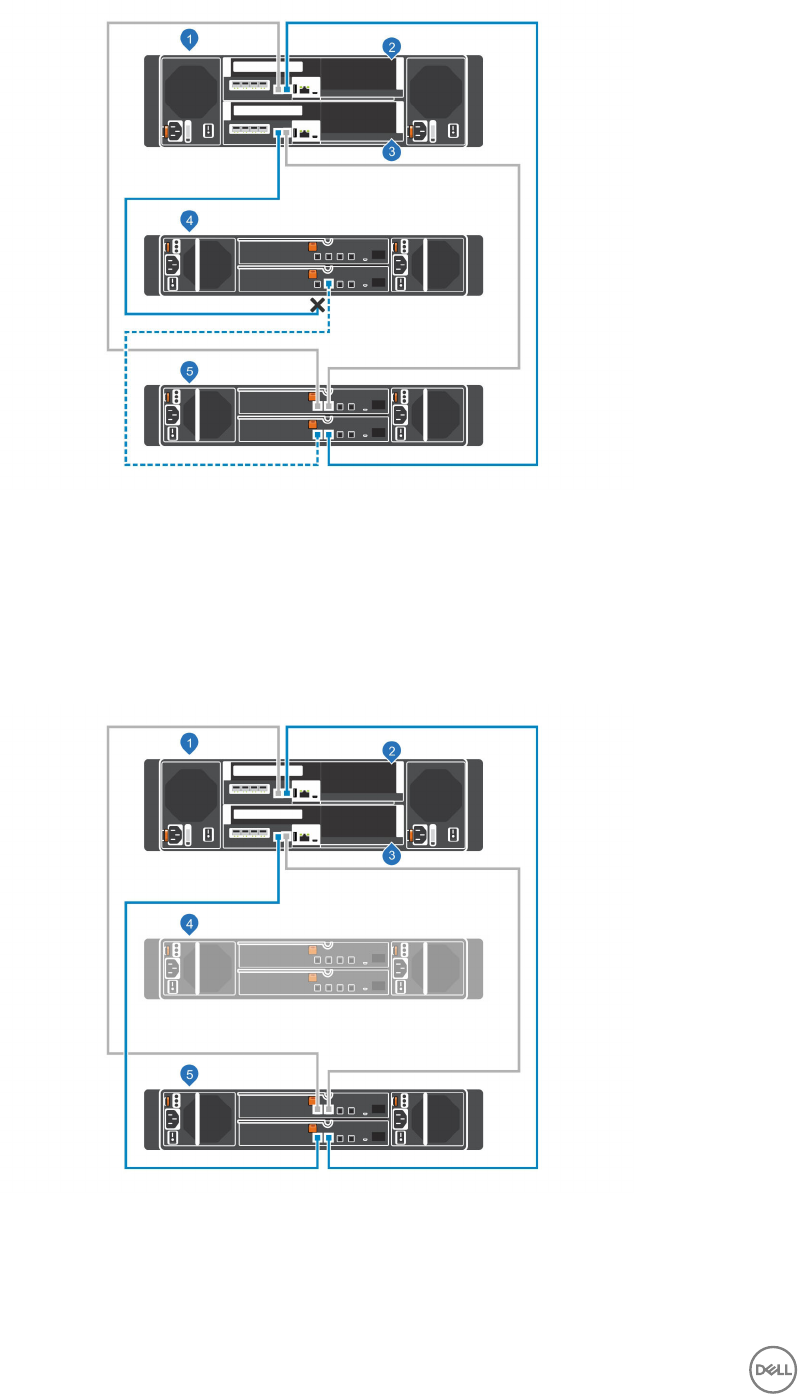

Add an SCv360 Expansion Enclosure to the A-Side of the Chain............................................................................... 75

Add an SCv360 Expansion Enclosure to the B-Side of the Chain............................................................................... 76

Removing an Expansion Enclosure from a Chain Currently in Service...............................................................................77

Release the Drives in the Expansion Enclosure........................................................................................................... 78

Disconnect the SCv300 and SCv320 Expansion Enclosure from the A-Side of the Chain..........................................78

Disconnect the SCv300 and SCv320 Expansion Enclosure from the B-Side of the Chain..........................................79

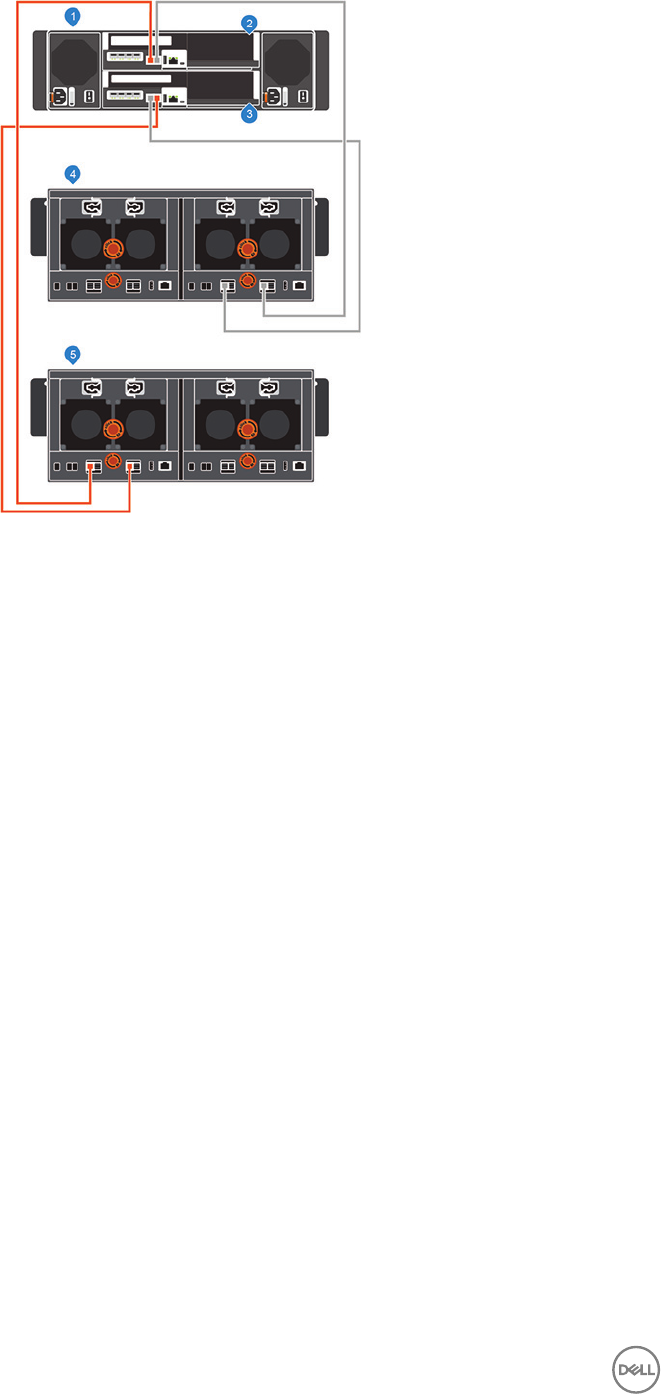

Disconnect the SCv360 Expansion Enclosure from the A-Side of the Chain...............................................................81

Disconnect the SCv360 Expansion Enclosure from the B-Side of the Chain.............................................................. 82

8 Troubleshooting Storage Center Deployment

Troubleshooting Storage Controllers

Troubleshooting Hard Drives

Troubleshooting Expansion Enclosures

Troubleshooting With Lasso

Lasso Application

Lasso Documentation

Lasso Requirements

A Set Up a Local Host or VMware Host

Set Up a VMware ESXi Host from Initial Setup

Set Up a Local Host from Initial Setup

Set Up Multiple VMware ESXi Hosts in a VMware vSphere Cluster

B Initialize the Storage Center Using the USB Serial Port

Install the USB Serial Port Driver

Establish a Terminal Session

Discover the Storage Center Using the Setup Utility Tool

C Worksheet to Record System Information

Storage Center Information

iSCSI Fault Domain Information

Additional Storage Center Information

Fibre Channel Zoning Information

D HBA Server Settings

Settings by HBA Manufacturer

Dell 12 Gb SAS HBAs

Cisco Fibre Channel HBAs

Emulex HBAs

QLogic HBAs

Settings by Server Operating System

Citrix XenServer

Microsoft Windows Server

Novell Netware

Red Hat Enterprise Linux

E iSCSI Settings

Flow Control Settings

Ethernet Flow Control

Switch Ports and Flow Control

Flow Control

Jumbo Frames and Flow Control

Other iSCSI Settings

About This Guide

This guide describes the features and technical specifications of the SCv3000 and SCv3020 storage system.

Revision History

Document Number: 680-136-001

| Revision | Date | Description |
|---|---|---|
| A | October 2017 | Initial release |
| B | November 2017 | Corrections to SCv360 cabling |

Audience

The information provided in this guide is intended for storage or network administrators and deployment personnel.

Contacting Dell

Dell provides several online and telephone-based support and service options. Availability varies by country and product, and some services might not be available in your area.

To contact Dell for sales, technical support, or customer service issues, go to www.dell.com/support.

• For customized support, type your system service tag on the support page and click Submit.

• For general support, browse the product list on the support page and select your product.

Related Publications

The following documentation provides additional information about the SCv3000 and SCv3020 storage system.

• Dell SCv3000 and SCv3020 Storage System Getting Started Guide

Provides information about an SCv3000 and SCv3020 storage system, such as installation instructions and technical specifications.

• Dell SCv3000 and SCv3020 Storage System Owner’s Manual

Provides information about an SCv3000 and SCv3020 storage system, such as hardware features, replacing customer-replaceable components, and technical specifications.

• Dell SCv3000 and SCv3020 Storage System Service Guide

Provides information about SCv3000 and SCv3020 storage system hardware, system component replacement, and system troubleshooting.

• Dell Storage Center Release Notes

Provides information about new features and known and resolved issues for the Storage Center software.

• Dell Storage Center Update Utility Administrator’s Guide

Describes how to use the Storage Center Update Utility to install Storage Center software updates. Updating Storage Center software using the Storage Center Update Utility is intended for use only by sites that cannot update Storage Center using standard methods.

• Dell Storage Center Software Update Guide

Describes how to update Storage Center software from an earlier version to the current version.

• Dell Storage Center Command Utility Reference Guide

Provides instructions for using the Storage Center Command Utility. The Command Utility provides a command-line interface (CLI) to enable management of Storage Center functionality on Windows, Linux, Solaris, and AIX platforms.


• Dell Storage Center Command Set for Windows PowerShell

Provides instructions for getting started with Windows PowerShell cmdlets and scripting objects that interact with the Storage Center using the PowerShell interactive shell, scripts, and PowerShell hosting applications. Help for individual cmdlets is available online.

• Storage Manager Installation Guide

Contains installation and setup information.

• Storage Manager Administrator’s Guide

Contains in-depth feature configuration and usage information.

• Dell Storage Manager Release Notes

Provides information about Storage Manager releases, including new features and enhancements, open issues, and resolved issues.

• Dell TechCenter

Provides technical white papers, best practice guides, and frequently asked questions about Dell Storage products. Go to http://en.community.dell.com/techcenter/storage/.


1

About the SCv3000 and SCv3020 Storage System

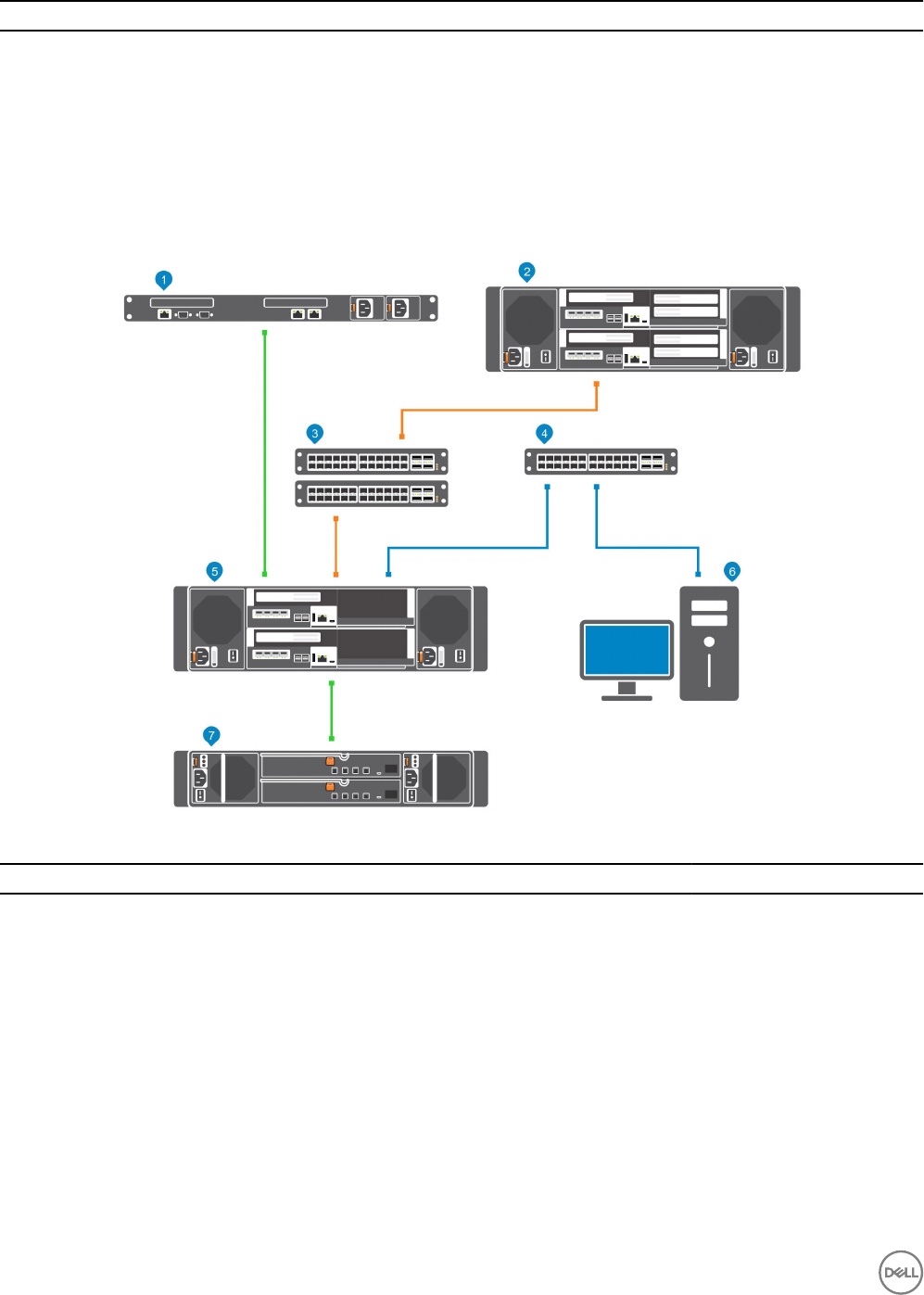

The SCv3000 and SCv3020 storage system provides the central processing capabilities for the Storage Center Operating System (OS), application software, and management of RAID storage.

The SCv3000 and SCv3020 storage system holds the physical drives that provide storage for the Storage Center. If additional storage is needed, the SCv3000 and SCv3020 support SCv300, SCv320, and SCv360 expansion enclosures.

Storage Center Hardware Components

The Storage Center described in this document consists of an SCv3000 or SCv3020 storage system, expansion enclosures, and enterprise-class switches.

To allow for storage expansion, the SCv3000 and SCv3020 storage system supports multiple SCv300, SCv320, and SCv360 expansion enclosures.

NOTE: The cabling between the storage system, switches, and host servers is referred to as front-end connectivity. The cabling between the storage system and expansion enclosures is referred to as back-end connectivity.

SCv3000 and SCv3020 Storage System

The SCv3000 and SCv3020 storage systems contain two redundant power supply/cooling fan modules and two storage controllers with multiple I/O ports. The I/O ports provide communication with host servers and expansion enclosures. The SCv3000 storage system contains up to 16 3.5-inch drives, and the SCv3020 storage system contains up to 30 2.5-inch drives.

The SCv3000 Series Storage Center supports up to 222 drives per Storage Center system. This total includes the drives in the storage system chassis and the drives in the expansion enclosures. The SCv3000 and SCv3020 require a minimum of seven hard disk drives (HDDs) or four solid-state drives (SSDs) installed in the storage system chassis or an expansion enclosure.

| Configuration | Number of Drives Supported |
|---|---|
| SCv3000 storage system with SCv300 or SCv320 expansion enclosure | 208 |
| SCv3000 storage system with SCv360 expansion enclosure | 196 |
| SCv3020 storage system with SCv300 or SCv320 expansion enclosure | 222 |
| SCv3020 storage system with SCv360 expansion enclosure | 210 |
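The SCv300 and SCv320 rows of the table can be reproduced with simple arithmetic. Take the drives in the chassis, add the drives in a full chain of one enclosure type, and cap the result at the 222-drive system limit. The following Python sketch uses only figures stated in this guide: chassis sizes of 16 and 30, enclosure sizes of 12 and 24 drives, and maximums of 16 and 8 enclosures. The function names are hypothetical. The minimum-drive helper assumes one particular reading of the requirement, namely that either condition is enough on its own.

```python
# Sketch: reproduce the SCv300/SCv320 rows of the drive-count table.
# All figures come from this guide; the function names are hypothetical.

SYSTEM_DRIVE_LIMIT = 222  # maximum drives per Storage Center system

CHASSIS_DRIVES = {"SCv3000": 16, "SCv3020": 30}

# enclosure model -> (drives per enclosure, maximum number of enclosures)
ENCLOSURES = {"SCv300": (12, 16), "SCv320": (24, 8)}


def max_drives(system: str, enclosure: str) -> int:
    """Chassis drives plus a full chain of one enclosure type, capped at the system limit."""
    per_enclosure, max_count = ENCLOSURES[enclosure]
    total = CHASSIS_DRIVES[system] + per_enclosure * max_count
    return min(total, SYSTEM_DRIVE_LIMIT)


def meets_minimum(hdds: int, ssds: int) -> bool:
    """Assumed reading of the minimum: at least seven HDDs or at least four SSDs."""
    return hdds >= 7 or ssds >= 4


if __name__ == "__main__":
    for system in CHASSIS_DRIVES:
        for enclosure in ENCLOSURES:
            print(system, enclosure, max_drives(system, enclosure))
```

For example, an SCv3020 with eight SCv320 enclosures gives 30 + 8 × 24 = 222 drives, which is exactly the system limit. The SCv360 rows are not modeled in this sketch.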

Expansion Enclosures

Expansion enclosures allow the data storage capabilities of the SCv3000 and SCv3020 storage system to be expanded beyond the 16 or 30 drives in the storage system chassis.

The SCv3000 and SCv3020 support up to 16 SCv300 expansion enclosures, up to eight SCv320 expansion enclosures, and up to two SCv360 expansion enclosures.

Switches

Dell offers enterprise-class switches as part of the total Storage Center solution.

The SCv3000 and SCv3020 storage system supports Fibre Channel (FC) and Ethernet switches, which provide robust connectivity to servers and allow for the use of redundant transport paths. FC or Ethernet switches can also provide connectivity to a remote Storage Center to allow for replication of data. In addition, Ethernet switches provide connectivity to a management network to allow configuration, administration, and management of the Storage Center.

Storage Center Communication

A Storage Center uses multiple types of communication for both data transfer and administrative functions.

Storage Center communication is classified into three types: front end, back end, and system administration.

Front-End Connectivity

Front-end connectivity provides I/O paths from servers to a storage system and replication paths from one Storage Center to another Storage Center. The SCv3000 and SCv3020 storage system provides the following types of front-end connectivity:

• Fibre Channel – Hosts, servers, or network-attached storage (NAS) appliances access storage by connecting to the storage system Fibre Channel ports through one or more Fibre Channel switches. Connecting host servers directly to the storage system, without using Fibre Channel switches, is not supported.

• iSCSI – Hosts, servers, or network-attached storage (NAS) appliances access storage by connecting to the storage system iSCSI ports through one or more Ethernet switches. Connecting host servers directly to the storage system, without using Ethernet switches, is not supported.

• SAS – Hosts or servers access storage by connecting directly to the storage system SAS ports.

When replication is licensed, the SCv3000 and SCv3020 can use the front-end Fibre Channel or iSCSI ports to replicate data to another Storage Center.
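The front-end rules above can be summarized as a small lookup table. Fibre Channel and iSCSI hosts must connect through switches and can use their ports for licensed replication. SAS hosts connect directly. The Python sketch below treats a SAS connection through a switch as unsupported and marks SAS ports as not used for replication. Both of those points are assumptions drawn from the wording of this section. The class and function names are hypothetical.

```python
# Sketch: front-end host connection rules summarized from this section.
# Names are hypothetical; SAS is modeled as direct-attach only (an assumption).
from dataclasses import dataclass


@dataclass(frozen=True)
class FrontEndTransport:
    name: str
    requires_switch: bool  # True: hosts must connect through one or more switches
    replication: bool      # True: front-end ports usable for licensed replication


TRANSPORTS = {
    "fc": FrontEndTransport("Fibre Channel", requires_switch=True, replication=True),
    "iscsi": FrontEndTransport("iSCSI", requires_switch=True, replication=True),
    "sas": FrontEndTransport("SAS", requires_switch=False, replication=False),
}


def host_connection_supported(transport: str, via_switch: bool) -> bool:
    """Return True if the host cabling matches the topology described for this transport."""
    t = TRANSPORTS[transport]
    # Switched transports need a switch; SAS is assumed to be direct-attach only.
    return via_switch if t.requires_switch else not via_switch
```

For example, `host_connection_supported("iscsi", via_switch=False)` returns `False`, because direct-attached iSCSI hosts are not supported.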

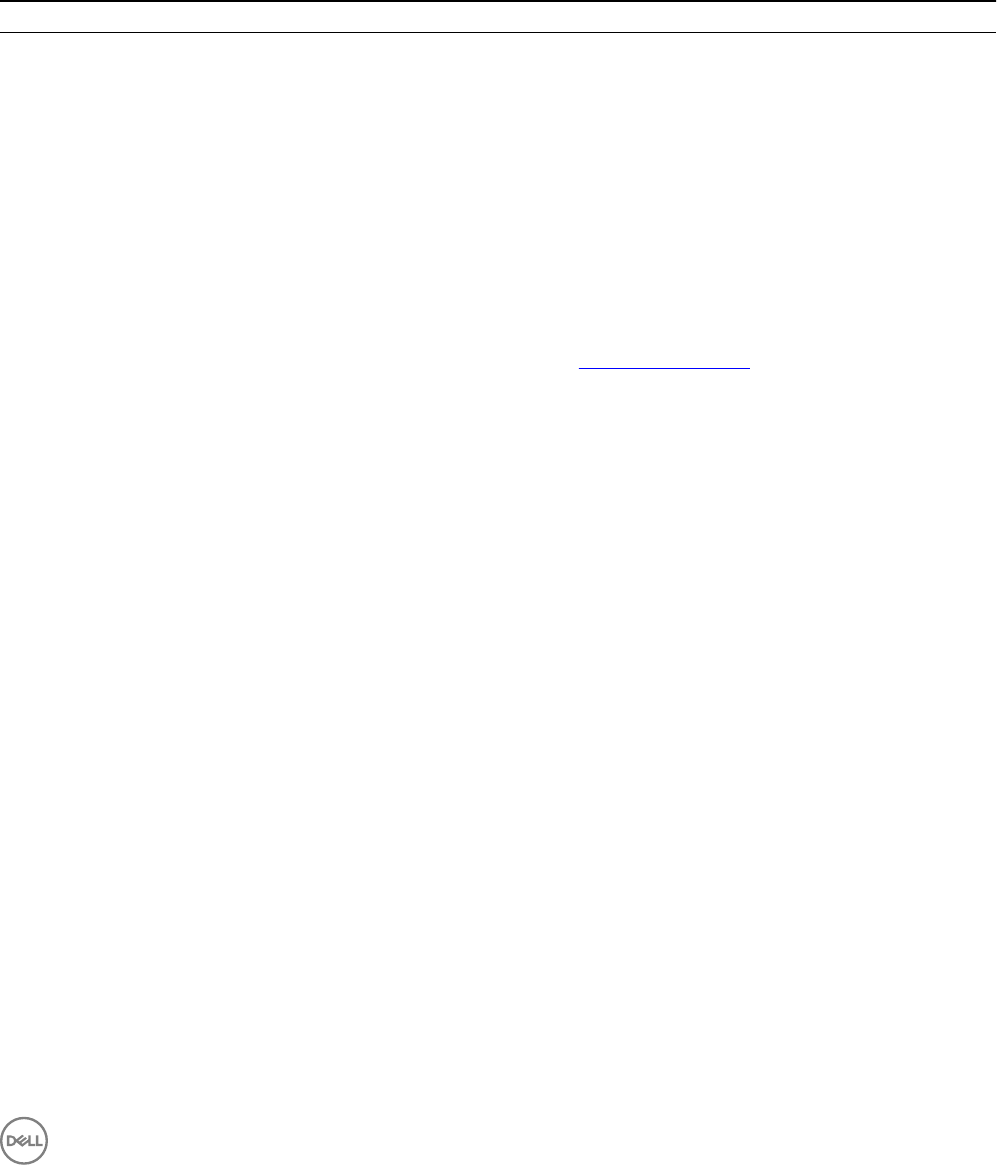

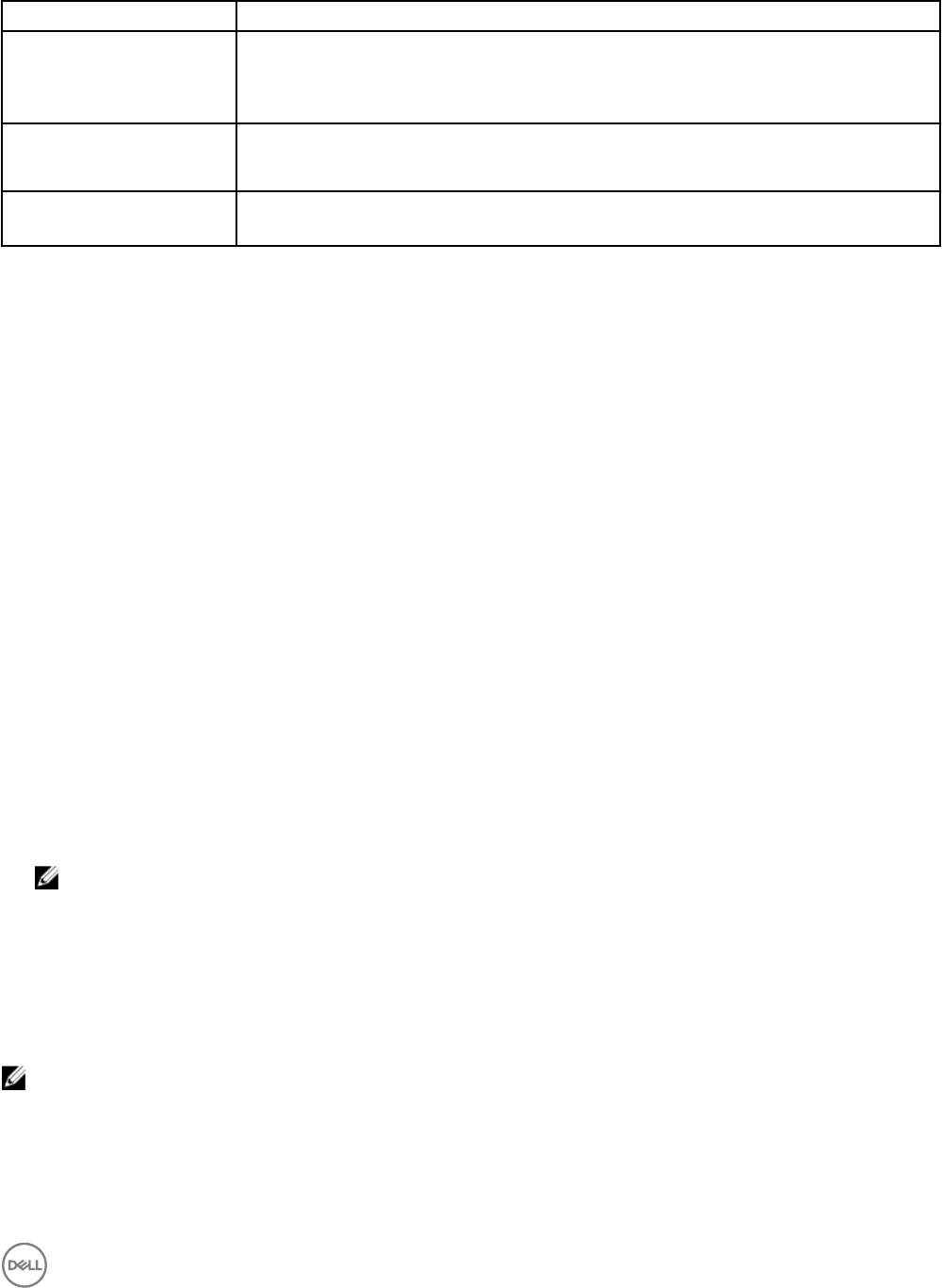

SCv3000 and SCv3020 Storage System With Fibre Channel Front-End Connectivity

A storage system with Fibre Channel front-end connectivity can communicate with the following components of a Storage Center system.

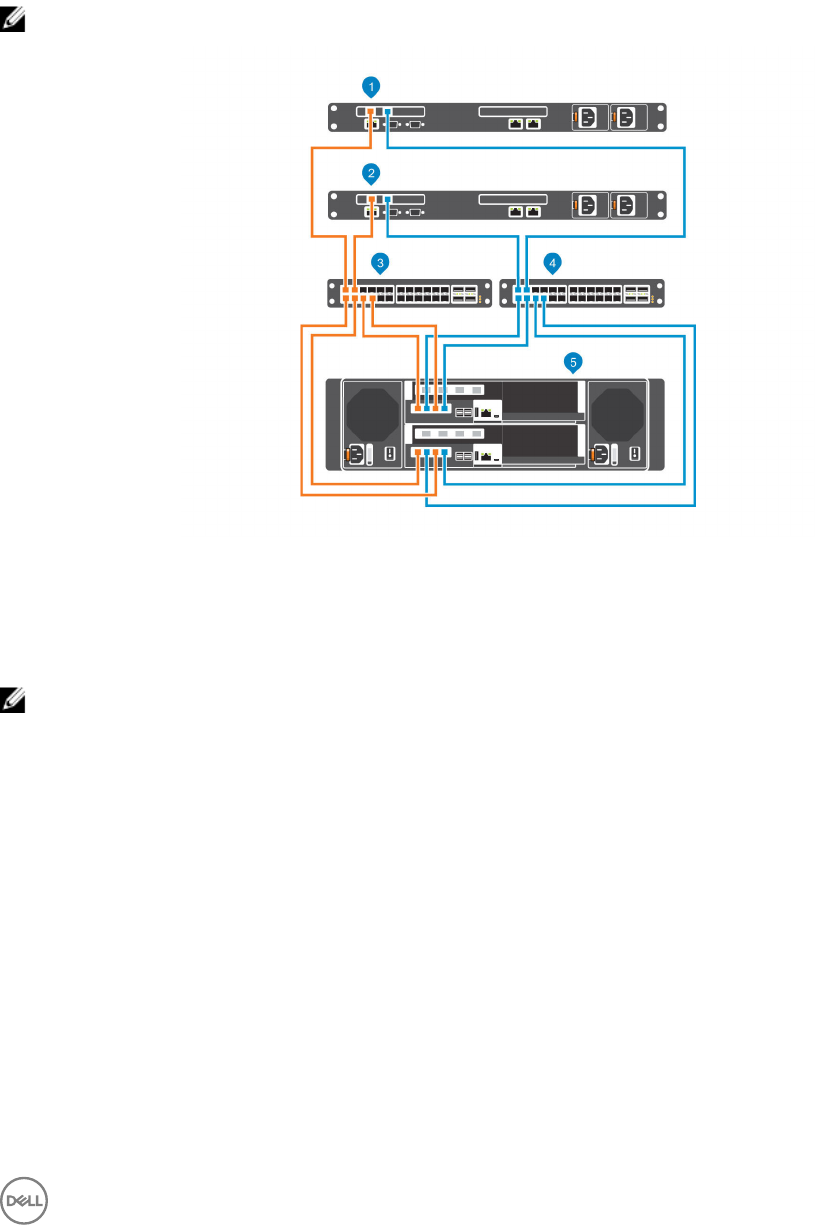

Figure 1. Storage System With Fibre Channel Front-End Connectivity

10 About the SCv3000 and SCv3020 Storage System

| Item | Description | Speed | Communication Type |
|---|---|---|---|
| 1 | Server with Fibre Channel host bus adapters (HBAs) | 8 Gbps or 16 Gbps | Front End |
| 2 | Remote Storage Center connected via Fibre Channel for replication | 8 Gbps or 16 Gbps | Front End |
| 3 | Fibre Channel switch (a pair of Fibre Channel switches is recommended for optimal redundancy and connectivity) | 8 Gbps or 16 Gbps | Front End |
| 4 | Ethernet switch for the management network | 1 Gbps | System Administration |
| 5 | SCv3000 and SCv3020 with FC front-end connectivity | 8 Gbps or 16 Gbps | Front End |
| 6 | Storage Manager (installed on a computer connected to the storage system through the Ethernet switch) | Up to 1 Gbps | System Administration |
| 7 | SCv300 and SCv320 expansion enclosures | 12 Gbps per channel | Back End |

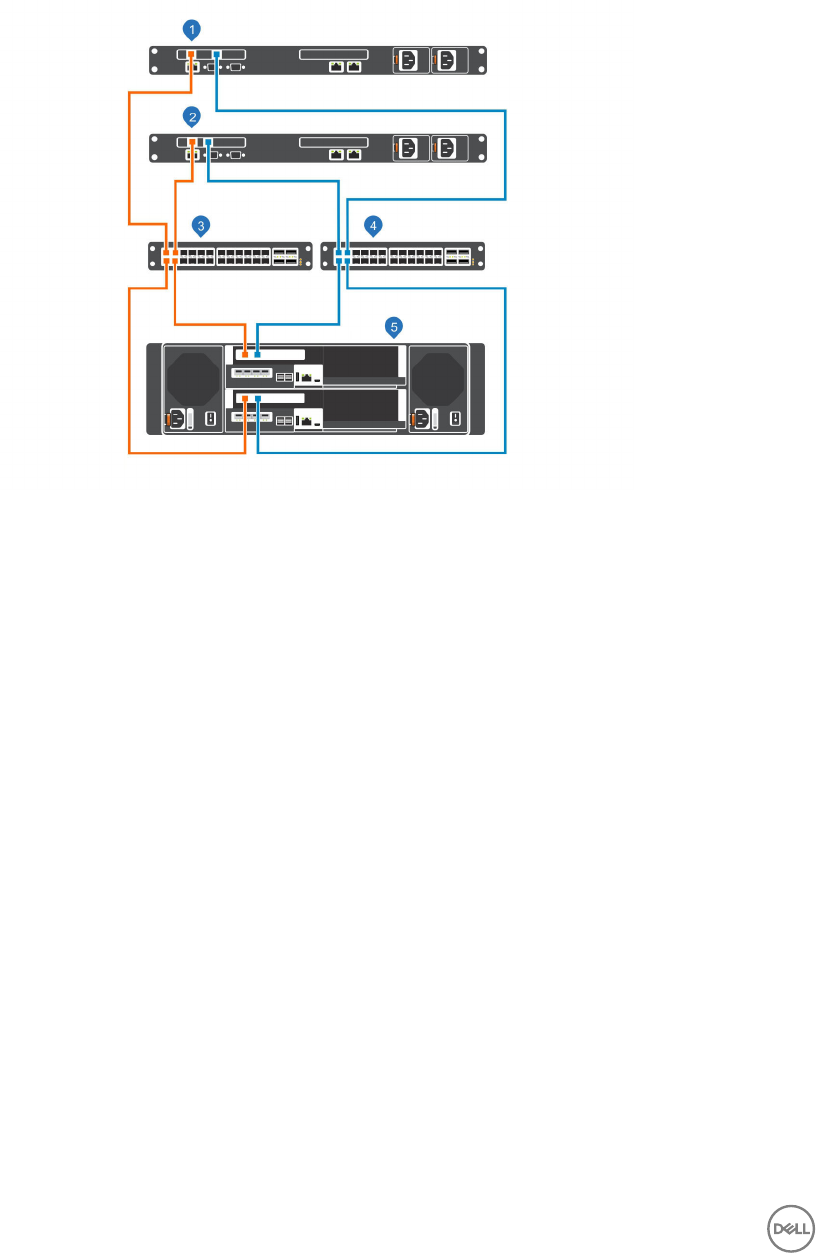

SCv3000 and SCv3020 Storage System With iSCSI Front-End Connectivity

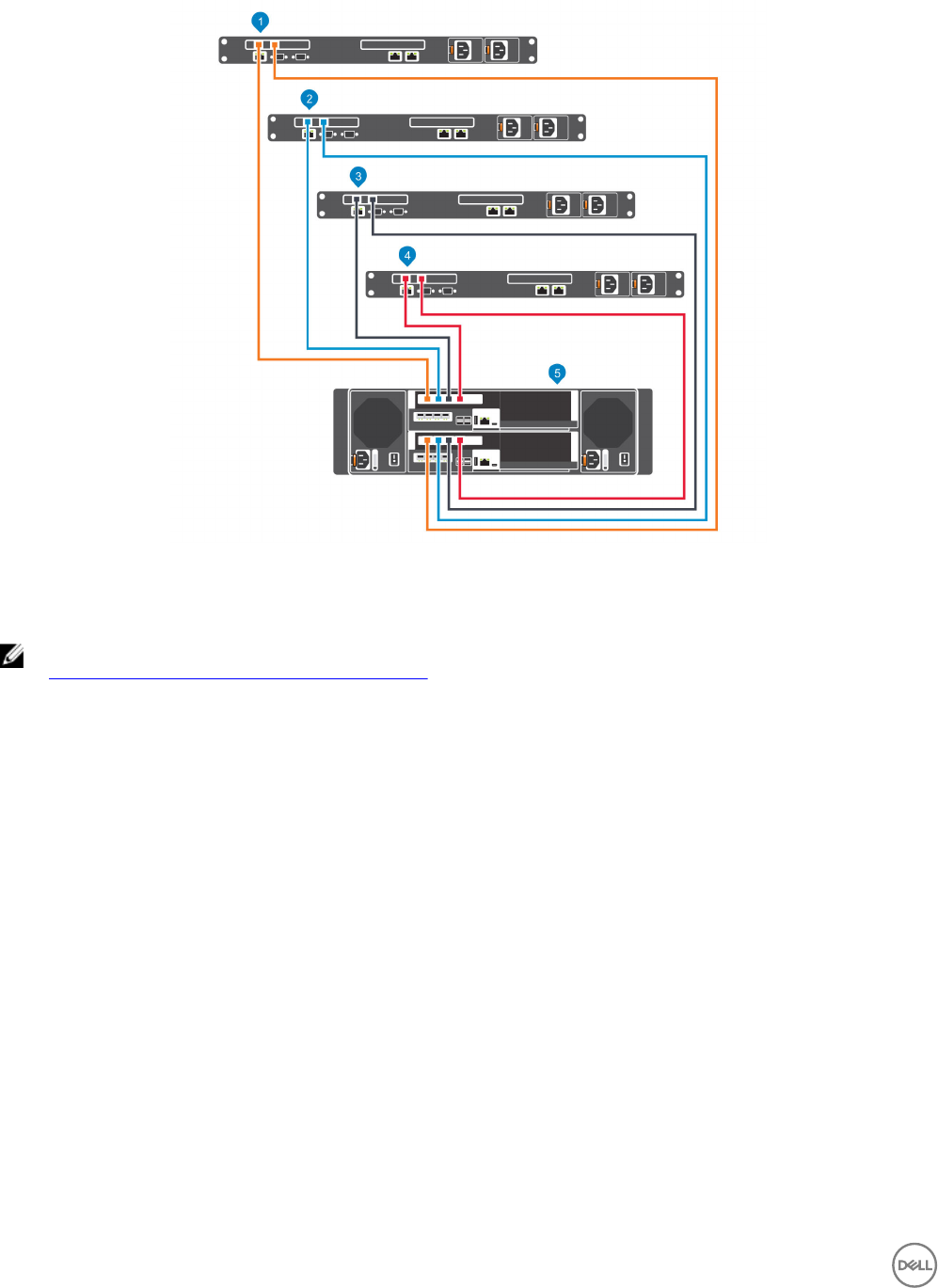

A storage system with iSCSI front-end connectivity can communicate with the following components of a Storage Center system.

Figure 2. Storage System With iSCSI Front-End Connectivity

| Item | Description | Speed | Communication Type |
|---|---|---|---|
| 1 | Server with Ethernet (iSCSI) ports or iSCSI host bus adapters (HBAs) | 1 GbE or 10 GbE | Front End |
| 2 | Remote Storage Center connected via iSCSI for replication | 1 GbE or 10 GbE | Front End |
| 3 | Ethernet switch (a pair of Ethernet switches is recommended for optimal redundancy and connectivity) | 1 GbE or 10 GbE | Front End |
| 4 | Ethernet switch for the management network | 1 Gbps | System Administration |
| 5 | SCv3000 and SCv3020 with iSCSI front-end connectivity | 1 GbE or 10 GbE | Front End |
| 6 | Storage Manager (installed on a computer connected to the storage system through the Ethernet switch) | Up to 1 Gbps | System Administration |
| 7 | SCv300 and SCv320 expansion enclosures | 12 Gbps per channel | Back End |

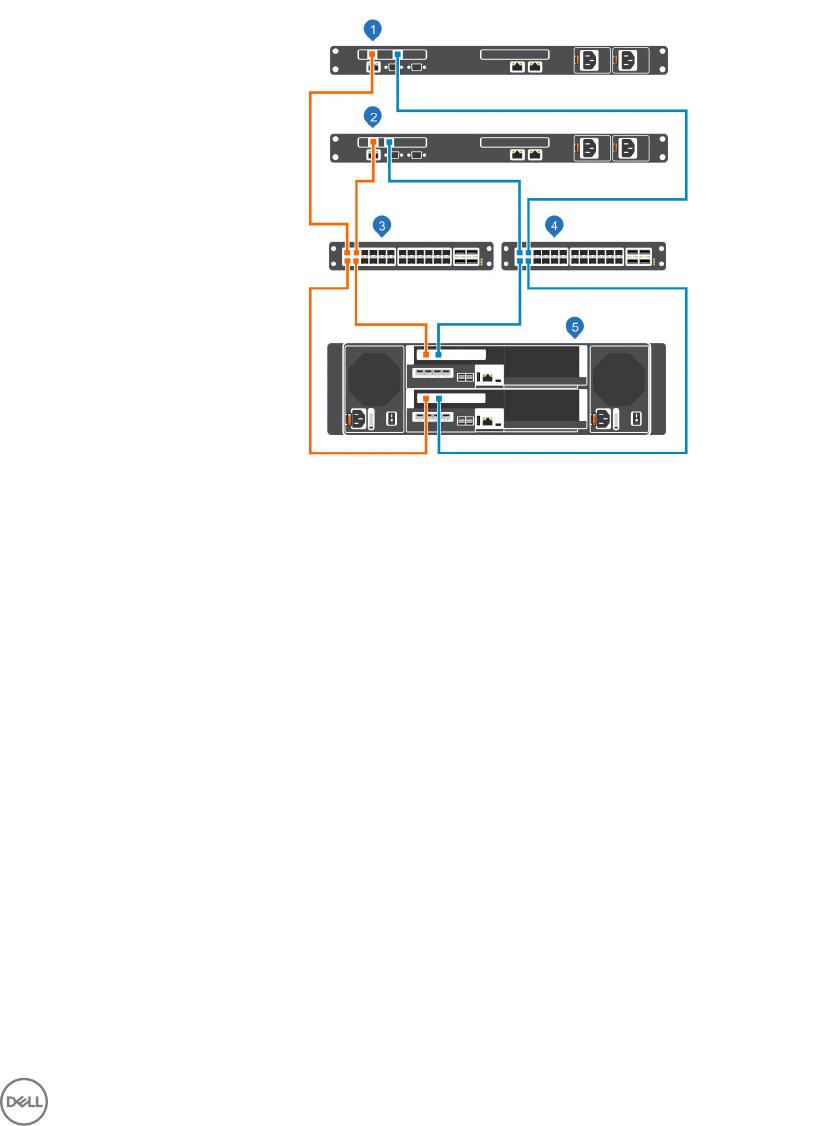

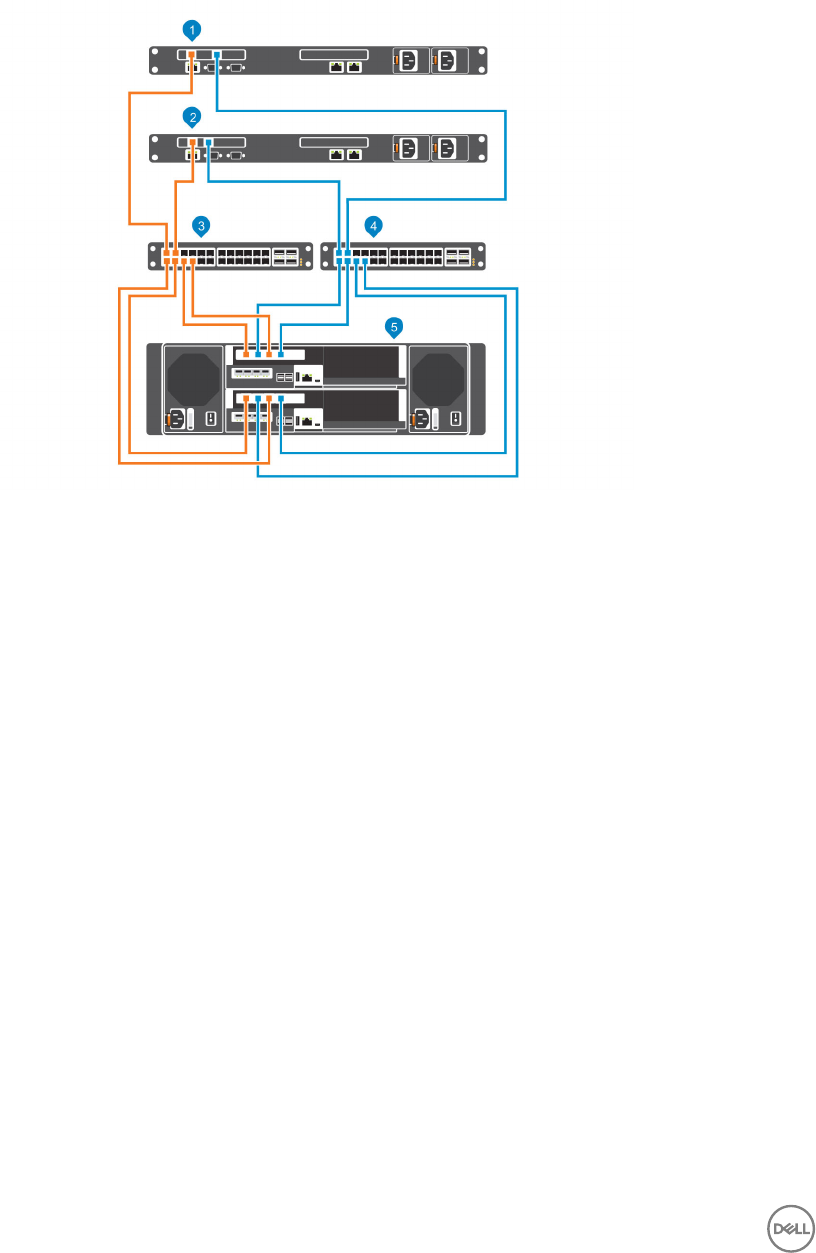

SCv3000 and SCv3020 Storage System With Front-End SAS Connectivity

The SCv3000 and SCv3020 storage system with front-end SAS connectivity can communicate with the following components of a Storage Center system.

Figure 3. Storage System With Front-End SAS Connectivity

| Item | Description | Speed | Communication Type |
|---|---|---|---|
| 1 | Server with SAS host bus adapters (HBAs) | 12 Gbps per channel | Front End |
| 2 | Remote Storage Center connected via iSCSI for replication | 1 GbE or 10 GbE | Front End |
| 3 | Ethernet switch (a pair of Ethernet switches is recommended for optimal redundancy and connectivity) | 1 GbE or 10 GbE | Front End |
| 4 | Ethernet switch for the management network | Up to 1 GbE | System Administration |
| 5 | SCv3000 and SCv3020 with front-end SAS connectivity | 12 Gbps per channel | Front End |
| 6 | Storage Manager (installed on a computer connected to the storage system through the Ethernet switch) | Up to 1 Gbps | System Administration |
| 7 | SCv300 and SCv320 expansion enclosures | 12 Gbps per channel | Back End |


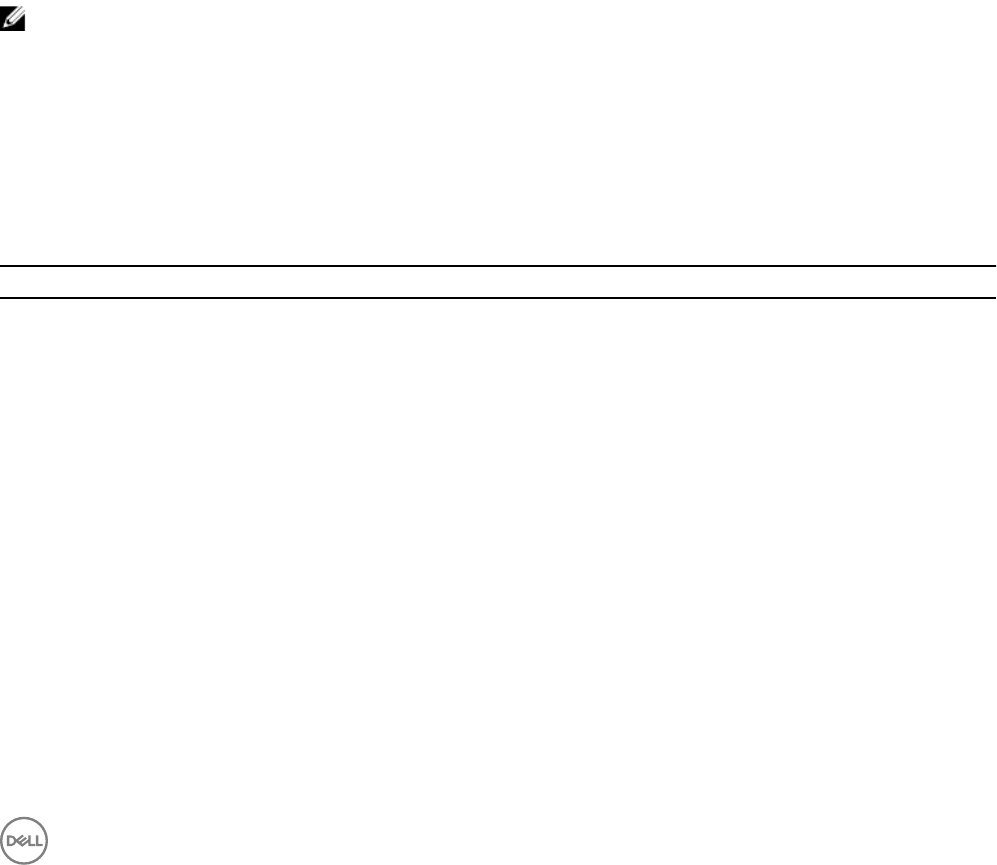

Using SFP+ Transceiver Modules

You can connect to the front-end port of a storage controller using a direct-attached SFP+ cable or an SFP+ transceiver module. An SCv3000 and SCv3020 storage system with 16 Gb Fibre Channel or 10 GbE iSCSI storage controllers uses short-range small-form-factor pluggable (SFP+) transceiver modules.

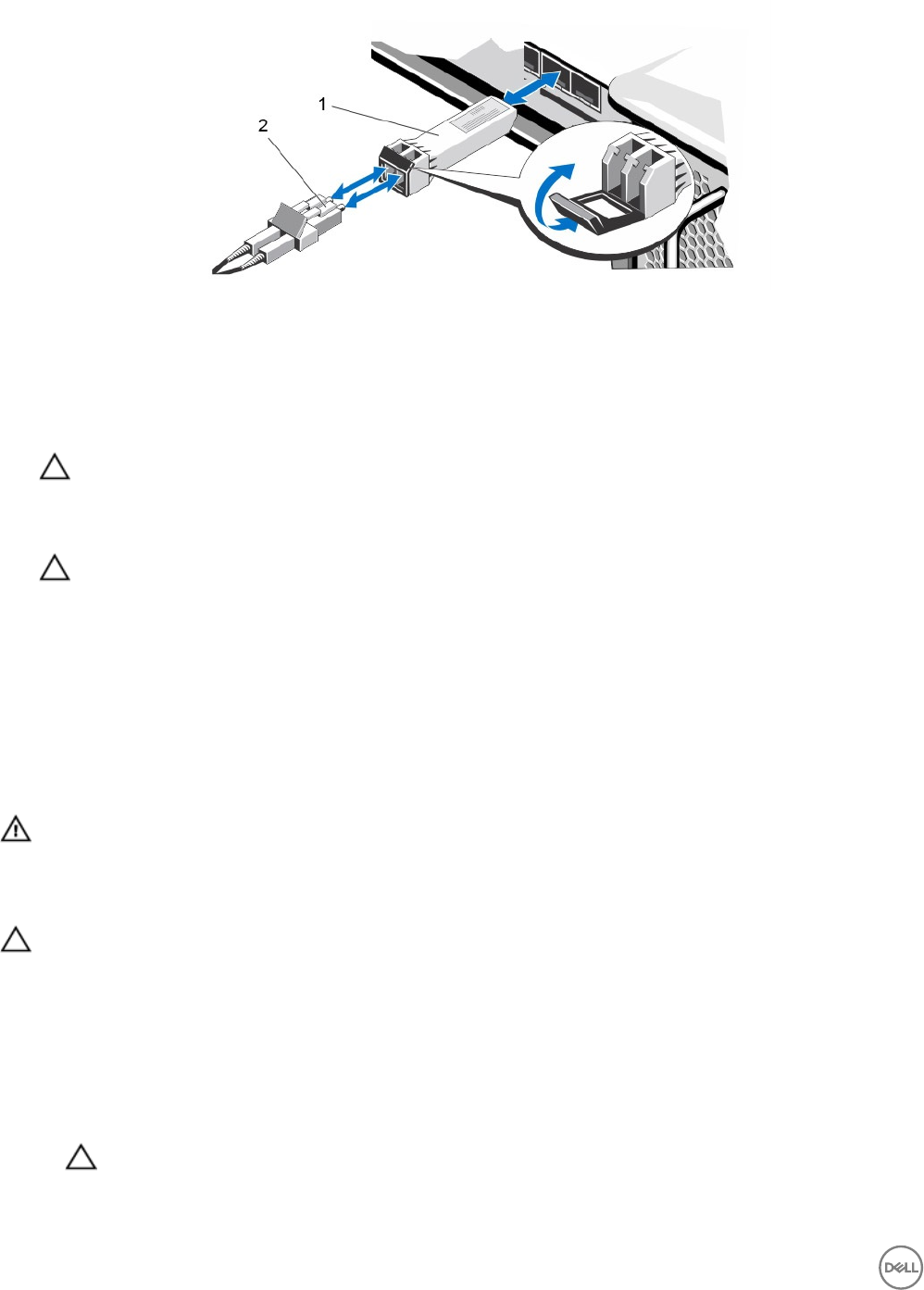

Figure 4. SFP+ Transceiver Module With a Bail Clasp Latch

The SFP+ transceiver modules are installed into the front-end ports of a storage controller.

Guidelines for Using SFP+ Transceiver Modules

Before installing SFP+ transceiver modules and fiber-optic cables, read the following guidelines.

CAUTION: When handling static-sensitive devices, take precautions to avoid damaging the product from static electricity.

• Use only Dell-supported SFP+ transceiver modules with the Storage Center. Other generic SFP+ transceiver modules are not supported and might not work with the Storage Center.

• The SFP+ transceiver module housing has an integral guide key that is designed to prevent you from inserting the transceiver module incorrectly.

• Use minimal pressure when inserting an SFP+ transceiver module into a Fibre Channel port. Forcing the SFP+ transceiver module into a port could damage the transceiver module or the port.

• The SFP+ transceiver module must be installed into a port before you connect the fiber-optic cable.

• The fiber-optic cable must be removed from the SFP+ transceiver module before you remove the transceiver module from the port.
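The last two guidelines define a strict ordering. The transceiver goes in before the cable, and the cable comes out before the transceiver. This can be modeled as a tiny state machine. The Python sketch below is a hypothetical model of those two rules only. It is not a Dell tool or API.

```python
# Sketch: enforce the SFP+ handling order from the guidelines above.
# The class and method names are hypothetical; this models the rules only.


class SfpPort:
    def __init__(self) -> None:
        self.transceiver_installed = False
        self.cable_connected = False

    def install_transceiver(self) -> None:
        if self.transceiver_installed:
            raise RuntimeError("transceiver already installed")
        self.transceiver_installed = True

    def connect_cable(self) -> None:
        # The transceiver must be in the port before the fiber-optic cable is connected.
        if not self.transceiver_installed:
            raise RuntimeError("install the SFP+ transceiver before connecting the cable")
        self.cable_connected = True

    def disconnect_cable(self) -> None:
        if not self.cable_connected:
            raise RuntimeError("no cable connected")
        self.cable_connected = False

    def remove_transceiver(self) -> None:
        # The fiber-optic cable must be removed before the transceiver is pulled.
        if self.cable_connected:
            raise RuntimeError("disconnect the fiber-optic cable before removing the transceiver")
        if not self.transceiver_installed:
            raise RuntimeError("no transceiver installed")
        self.transceiver_installed = False
```

A valid sequence is `install_transceiver()`, `connect_cable()`, `disconnect_cable()`, `remove_transceiver()`. Calling them in any other order raises `RuntimeError`.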

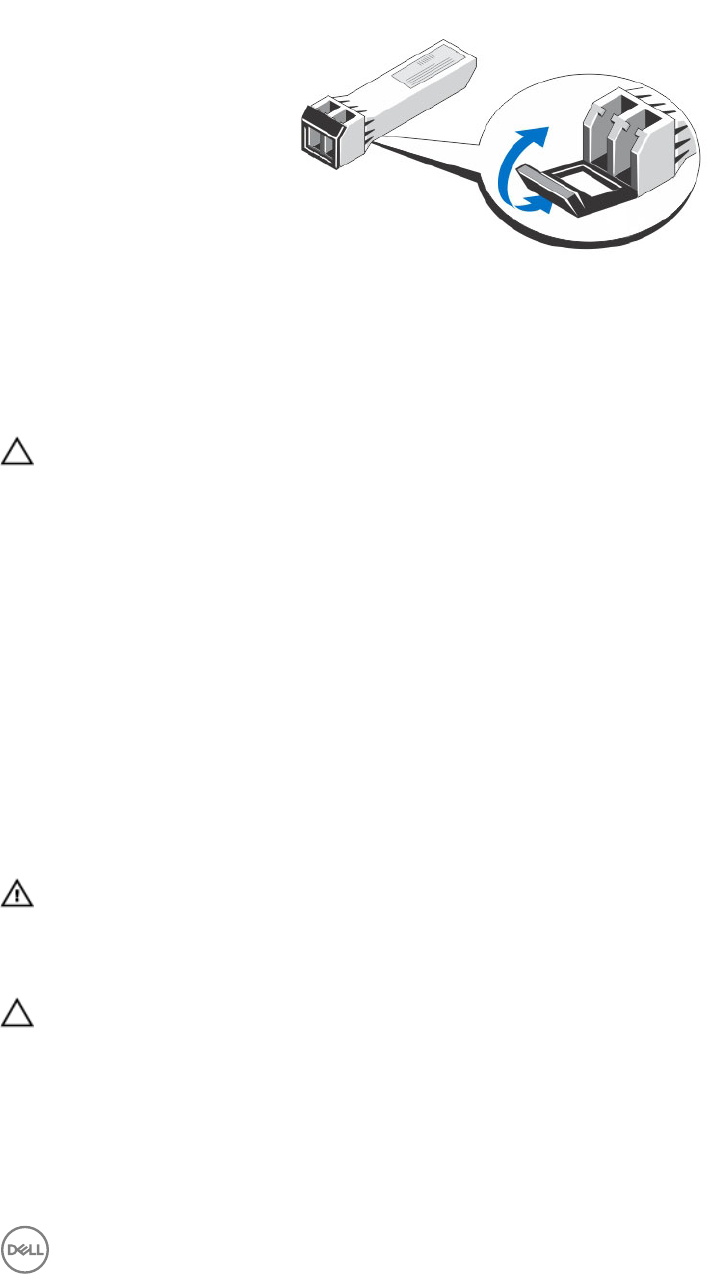

Install an SFP+ Transceiver Module

Use the following procedure to install an SFP+ transceiver module into a storage controller.

About this task

Read the following cautions and information before installing an SFP+ transceiver module.

WARNING: To reduce the risk of injury from laser radiation or damage to the equipment, take the following precautions:

• Do not open any panels, operate controls, make adjustments, or perform procedures to a laser device other than those specified in this document.

• Do not stare into the laser beam.

CAUTION: Transceiver modules can be damaged by electrostatic discharge (ESD). To prevent ESD damage to the transceiver module, take the following precautions:

• Wear an antistatic discharge strap while handling transceiver modules.

• Place transceiver modules in antistatic packing material when transporting or storing them.

Steps

1. Position the transceiver module so that the key is oriented correctly to the port in the storage controller.


Figure 5. Install the SFP+ Transceiver Module

1. SFP+ transceiver module 2. Fiber-optic cable connector

2. Insert the transceiver module into the port until it is firmly seated and the latching mechanism clicks.

The transceiver modules are keyed so that they can be inserted only with the correct orientation. If a transceiver module does not slide in easily, ensure that it is correctly oriented.

CAUTION: To reduce the risk of damage to the equipment, do not use excessive force when inserting the transceiver module.

3. Position the fiber-optic cable so that the key (the ridge on one side of the cable connector) is aligned with the slot in the transceiver module.

CAUTION: Touching the end of a fiber-optic cable damages the cable. Whenever a fiber-optic cable is not connected, replace the protective covers on the ends of the cable.

4. Insert the fiber-optic cable into the transceiver module until the latching mechanism clicks.

Remove an SFP+ Transceiver Module

Complete the following steps to remove an SFP+ transceiver module from a storage controller.

Prerequisite

Use failover testing to make sure that the connection between host servers and the Storage Center remains up if the port is disconnected.

About this task

Read the following cautions and information before beginning the removal and replacement procedures.

WARNING: To reduce the risk of injury from laser radiation or damage to the equipment, take the following precautions:

• Do not open any panels, operate controls, make adjustments, or perform procedures to a laser device other than those specified in this document.

• Do not stare into the laser beam.

CAUTION: Transceiver modules can be damaged by electrostatic discharge (ESD). To prevent ESD damage to the transceiver module, take the following precautions:

• Wear an antistatic discharge strap while handling modules.

• Place modules in antistatic packing material when transporting or storing them.

Steps

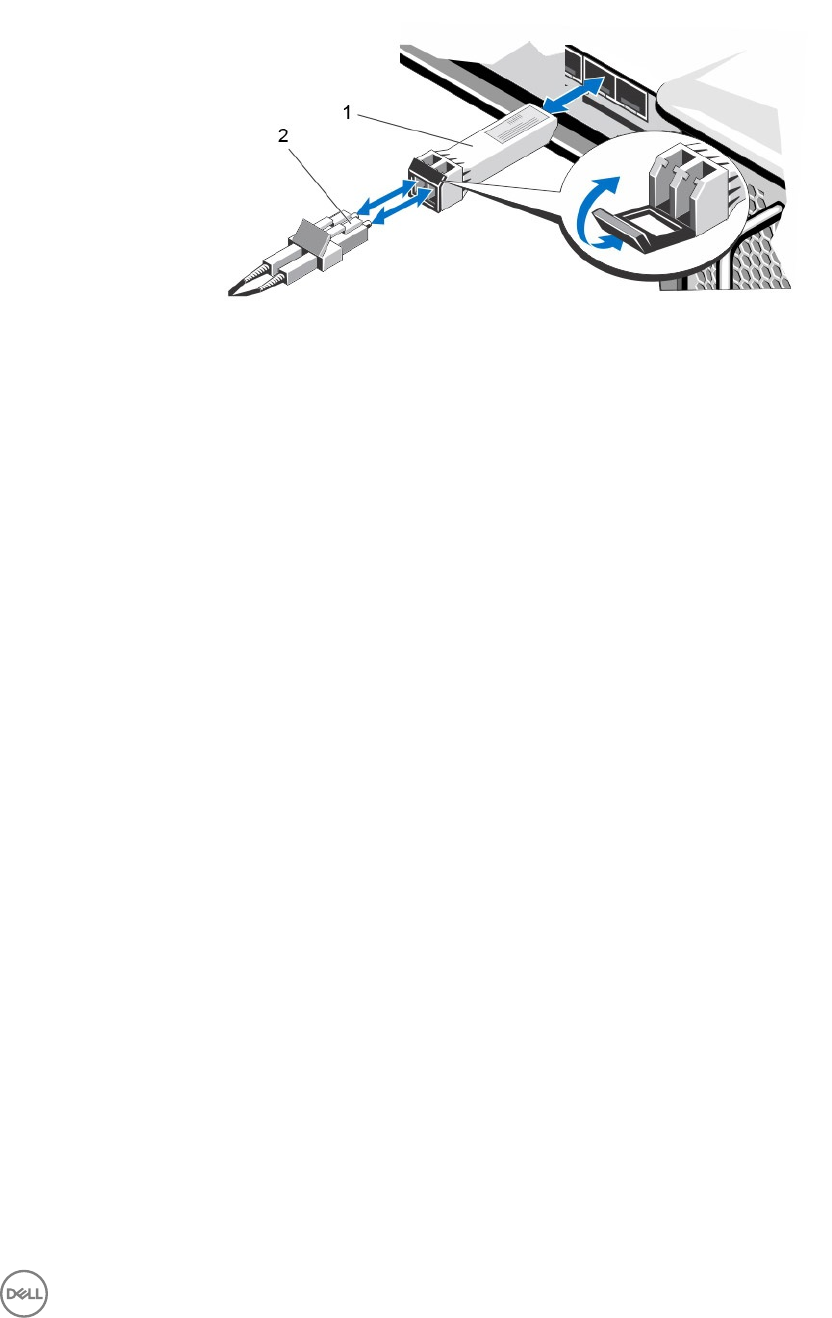

1. Remove the fiber-optic cable that is inserted into the transceiver.

a. Make sure the fiber-optic cable is labeled before removing it.

b. Press the release clip on the bottom of the cable connector to remove the fiber-optic cable from the transceiver.

CAUTION: Touching the end of a fiber-optic cable damages the cable. Whenever a fiber-optic cable is not connected, replace the protective covers on the ends of the cables.

2. Open the transceiver module latching mechanism.


3. Grasp the bail clasp latch on the transceiver module and pull the latch out and down to eject the transceiver module from the socket.

4. Slide the transceiver module out of the port.

Figure 6. Remove the SFP+ Transceiver Module

1. SFP+ transceiver module 2. Fiber-optic cable connector

Back-End Connectivity

Back-end connectivity is strictly between the storage system and expansion enclosures.

The SCv3000 and SCv3020 storage system supports back-end connectivity to multiple SCv300, SCv320, and SCv360 expansion enclosures.

System Administration

To perform system administration, the Storage Center communicates with computers using the Ethernet management (MGMT) port on the storage controllers.

The Ethernet management port is used for Storage Center configuration, administration, and management.

Storage Center Replication

Storage Center sites can be collocated or remotely connected, and data can be replicated between sites. Storage Center replication can duplicate volume data to another site in support of a disaster recovery plan or to provide local access to a remote data volume. Typically, data is replicated remotely as part of an overall disaster avoidance or recovery plan.

The SCv3000 and SCv3020 support replication to other SCv3000/SCv3020, SC5020, SC7020, SC8000, SC9000, and SC4020 storage systems. However, a Storage Manager Data Collector must be used to replicate data between the storage systems. For more information about installing and managing the Data Collector, and setting up replications, see the Storage Manager Administrator’s Guide.

SCv3000 and SCv3020 Storage System Hardware

The SCv3000 and SCv3020 storage system ships with Dell Enterprise Plus Value drives, two redundant power supply/cooling fan modules, and two redundant storage controllers.

Each storage controller contains the front-end, back-end, and management communication ports of the storage system.

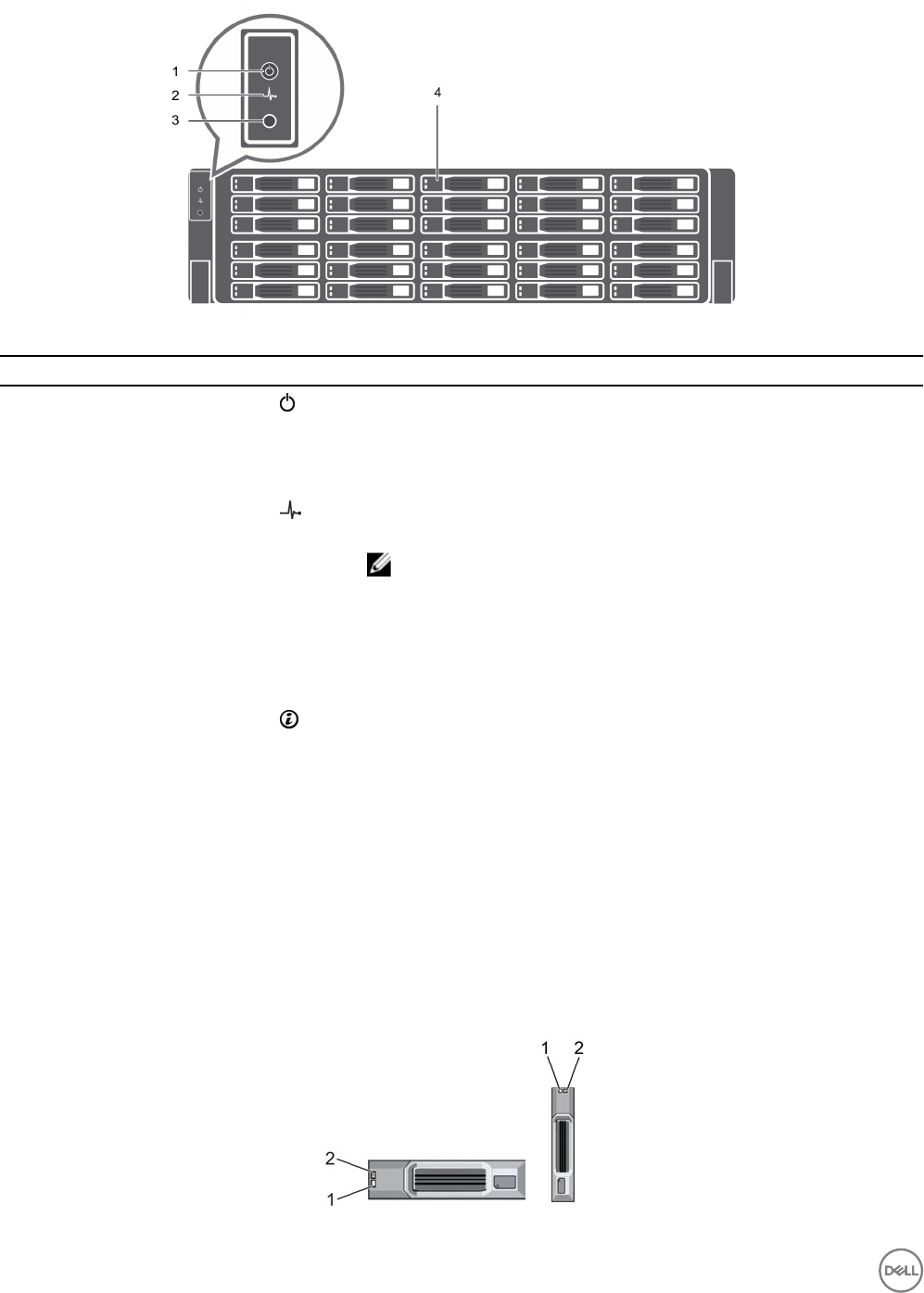

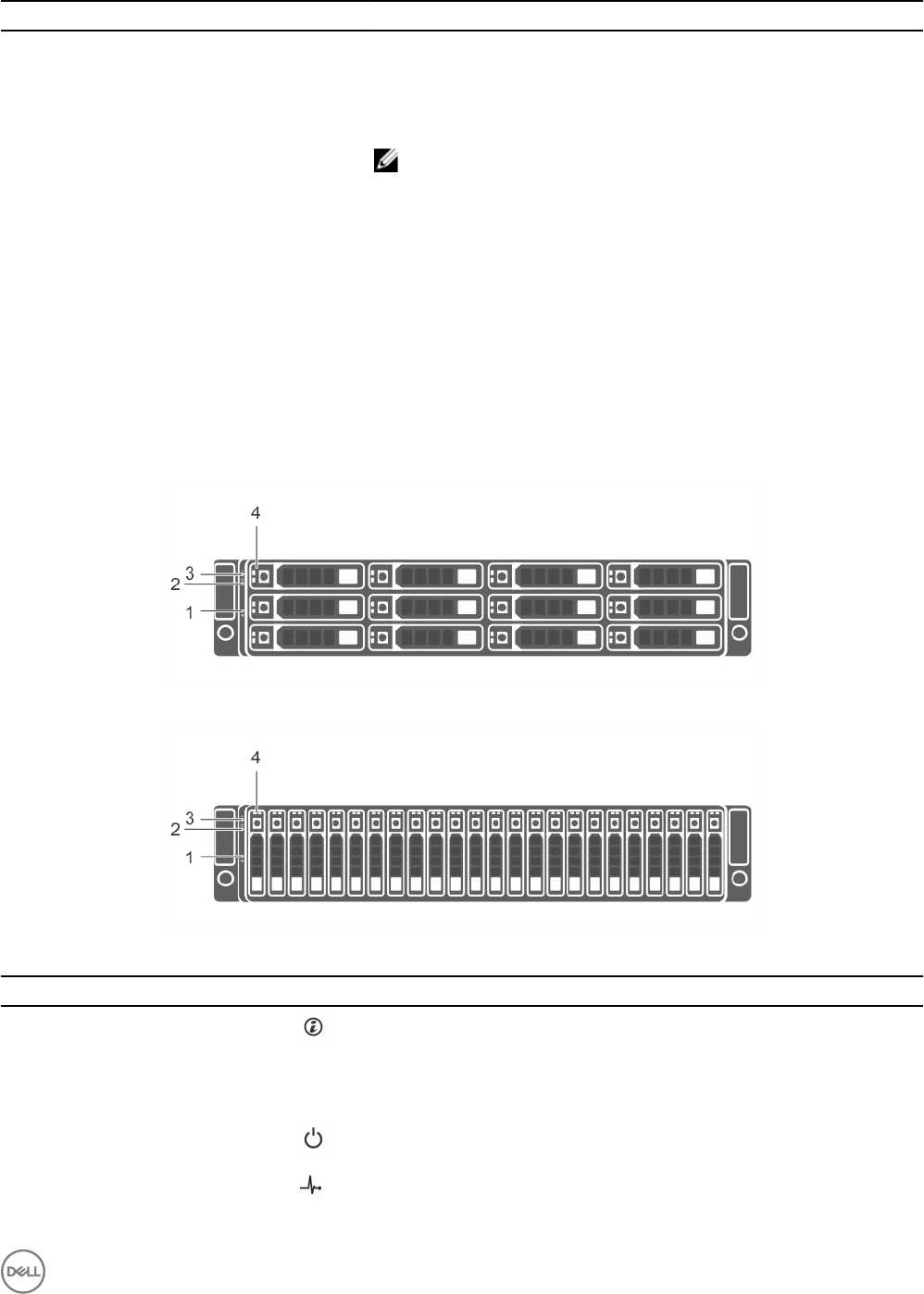

SCv3000 and SCv3020 Storage System Front-Panel View

The front panel of the storage system contains power and status indicators, and a system identification button.

In addition, the hard drives are installed and removed through the front of the storage system chassis.


Figure 7. SCv3000 and SCv3020 Storage System Front-Panel View

Item Name Description

1 Power indicator – Lights when the storage system power is on.

• Off – No power

• On steady green – At least one power supply is providing power to the storage system

2 Status indicator – Lights when the startup process for both storage controllers is complete with no faults detected.

NOTE: The startup process can take 5–10 minutes or more.

• Off – One or both storage controllers are running startup routines, or a fault has been detected during startup

• On steady blue – Both storage controllers have completed the startup process and are in normal operation

• Blinking amber – Fault detected

3 Identification button – Blinking blue continuously: a user sent a command to the storage system to make the LED blink so that the user can identify the storage system in the rack.

• The identification LED blinks on the control panel of the chassis, which allows users to find the storage system when looking at the front of the rack.

• The identification LEDs on the storage controllers also blink, which allows users to find the storage system when looking at the back of the rack.

4 Hard drives – Up to 16 internal 3.5-inch SAS hard drives (SCv3000) or up to 30 internal 2.5-inch SAS hard drives (SCv3020)

SCv3000 and SCv3020 Storage System Drives

The SCv3000 and SCv3020 storage system supports Dell Enterprise Plus Value drives.

The drives in an SCv3000 storage system are installed horizontally. The drives in an SCv3020 storage system are installed vertically.

The indicators on the drives provide status and activity information.

Figure 8. SCv300 and SCv320 Expansion Enclosure Drive Indicators


Item Control/Feature Indicator Code

1 Drive activity indicator • Blinking green – Drive has I/O activity

• Steady green – Drive is detected and has no faults

2 Drive status indicator • Steady green – Normal operation

• Blinking green – A command was sent by Dell Storage Manager to the drive to make the LED blink so that users can identify the drive in the rack.

• Blinking amber – Hardware or firmware fault

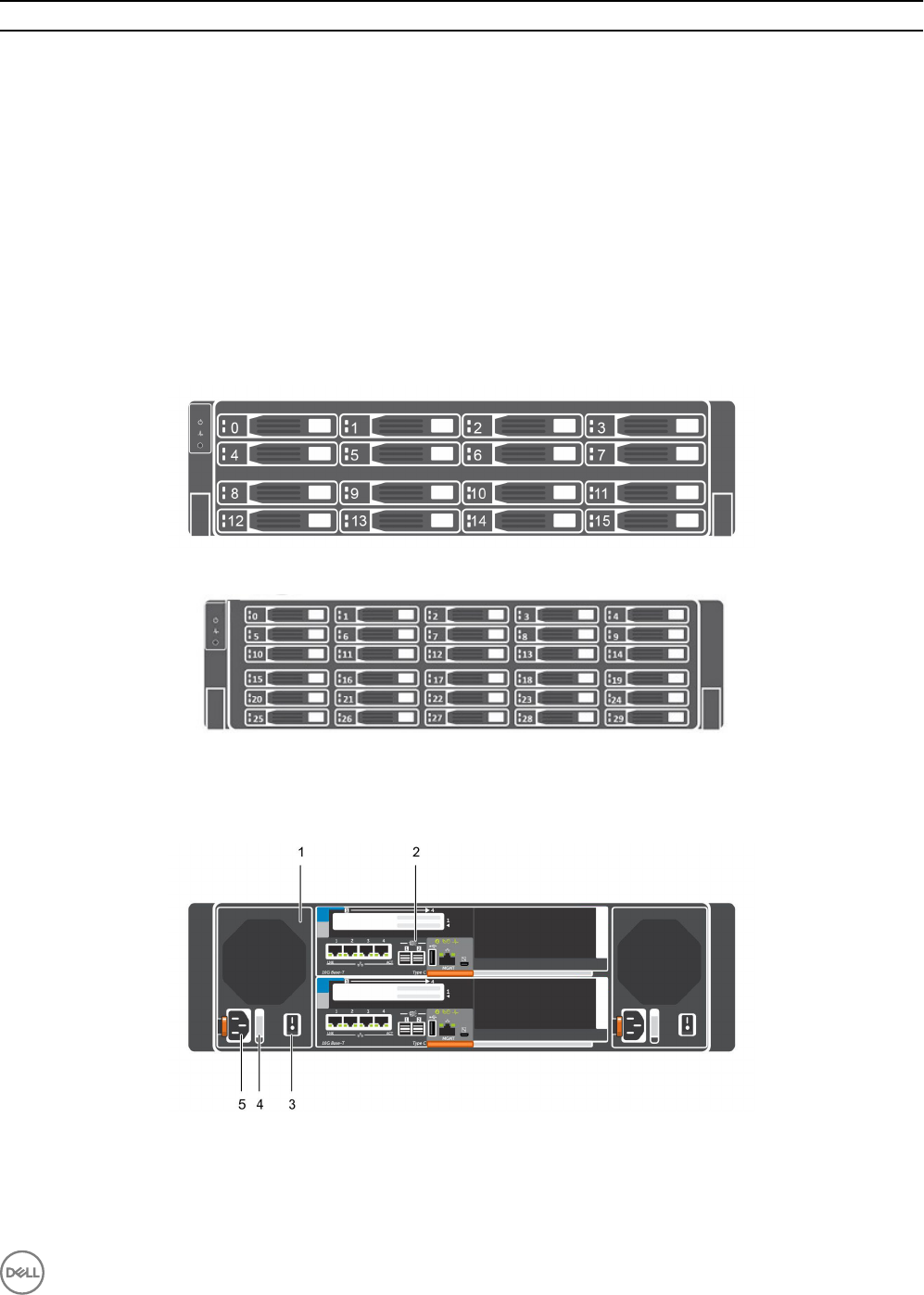

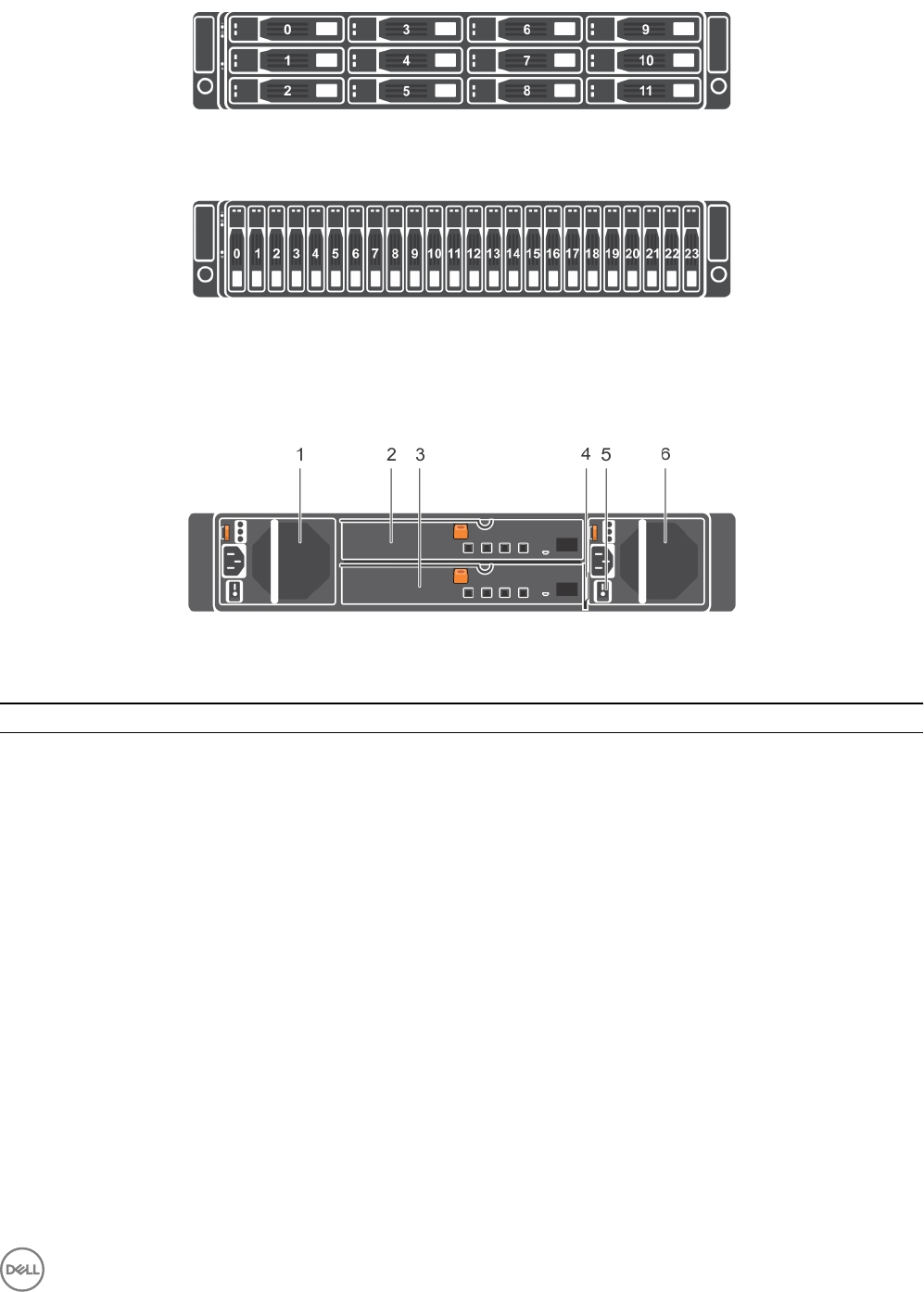

SCv3000 and SCv3020 Storage System Drive Numbering

The storage system holds up to 16 or 30 drives, which are numbered from left to right in rows starting from 0 at the top-left drive. Drive numbers increment from left to right, and then top to bottom, such that the first row of drives is numbered from 0 to 4 from left to right, and the second row of drives is numbered from 5 to 9 from left to right.

Dell Storage Manager identifies drives as XX-YY, where XX is the unit ID of the storage system and YY is the drive position inside the storage system.
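Scripts that process drive listings may need to split the XX-YY identifier into its two parts. The Python sketch below assumes that both fields are decimal integers separated by a single hyphen. It also assumes two-digit zero padding when formatting an ID. This guide does not specify the exact display format, and the function names are hypothetical.

```python
# Sketch: split or build a drive identifier of the form XX-YY.
# Assumes decimal fields separated by one hyphen; names are hypothetical.
from typing import NamedTuple


class DriveId(NamedTuple):
    unit_id: int   # XX: unit ID of the storage system or expansion enclosure
    position: int  # YY: drive position inside that unit


def parse_drive_id(text: str) -> DriveId:
    unit, sep, pos = text.strip().partition("-")
    if not sep or not unit.isdigit() or not pos.isdigit():
        raise ValueError(f"not an XX-YY drive identifier: {text!r}")
    return DriveId(int(unit), int(pos))


def format_drive_id(drive: DriveId) -> str:
    # Two-digit zero padding is an assumption about how IDs are displayed.
    return f"{drive.unit_id:02d}-{drive.position:02d}"
```

For example, `parse_drive_id("01-05")` returns `DriveId(unit_id=1, position=5)`, and formatting that value produces `"01-05"` again.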

Figure 9. SCv3000 Storage System Drive Numbering

Figure 10. SCv3020 Storage System Drive Numbering

SCv3000 and SCv3020 Storage System Back-Panel View

The back panel of the storage system contains the storage controller indicators and power supply indicators.

Figure 11. SCv3000 and SCv3020 Storage System Back-Panel View


Item Name Description

1 Power supply/cooling fan module (2) – Contains a 1485 W power supply and fans that provide cooling for the storage system, with AC input to the power supply of 200–240 V. In Dell Storage Manager, the power supply/cooling fan module on the left side of the back panel is Power Supply 1, and the power supply/cooling fan module on the right side of the back panel is Power Supply 2.

2 Storage controller (2) – Each storage controller contains:

• Optional 10 GbE iSCSI mezzanine card with four SFP+ ports or four RJ45 10GBASE-T ports

• One expansion slot for a front-end I/O card:

– Fibre Channel

– iSCSI

– SAS

• SAS expansion ports – Two 12 Gbps SAS ports for back-end connectivity to expansion enclosures

• USB port – Single USB 2.0 port

• MGMT port – Embedded Ethernet port for system management

• Serial port – Micro-USB serial port used for an alternative initial configuration and support-only functions

3 Power switch (2) – Controls power for the storage system. Each power supply/cooling fan module has one power switch.

4 Power supply/cooling fan module LED handle – The handle of the power supply/cooling fan module indicates the DC power status of the power supply and the fans.

• Not lit – No power

• Solid green – Power supply has valid power source and is operational

• Blinking amber – Error condition in the power supply

• Blinking green – Firmware is being updated

• Blinking green then off – Power supply mismatch

5 Power socket (2) – Accepts the following standard computer power cords:

• IEC320-C13 for deployments worldwide

• IEC60320-C19 for deployments in Japan

Power Supply and Cooling Fan Modules

The SCv3000 and SCv3020 storage system supports two hot-swappable power supply/cooling fan modules.

The cooling fans and the power supplies are integrated into the power supply/cooling fan module and cannot be replaced separately. If one power supply/cooling fan module fails, the second module continues to provide power to the storage system.

NOTE: When a power supply/cooling fan module fails, the cooling fan speed in the remaining module increases significantly to provide adequate cooling. The cooling fan speed decreases gradually when a new power supply/cooling fan module is installed.

CAUTION: A single power supply/cooling fan module can be removed from a powered on storage system for no more than 90 seconds. If a power supply/cooling fan module is removed for longer than 90 seconds, the storage system might shut down automatically to prevent damage.


SCv3000 and SCv3020 Storage Controller Features and Indicators

The SCv3000 and SCv3020 storage system includes two storage controllers in two interface slots.

SCv3000 and SCv3020 Storage Controller

The following figure shows the features and indicators on the storage controller.

Figure 12. SCv3000 and SCv3020 Storage Controller

Item Control/Feature Description

1 I/O card slot Fibre Channel I/O card – Ports are numbered 1 to 4 from left to right

• The LEDs on the 16 Gb Fibre Channel ports have the following meanings:

– All off – No power

– All on – Booting up

– Blinking amber – 4 Gbps activity

– Blinking green – 8 Gbps activity

– Blinking yellow – 16 Gbps activity

– Blinking amber and yellow – Beacon

– All blinking (simultaneous) – Firmware initialized

– All blinking (alternating) – Firmware fault

• The LEDs on the 32 Gb Fibre Channel ports have the following meanings:

– All off – No power

– All on – Booting up

– Blinking amber – 8 Gbps activity

– Blinking green – 16 Gbps activity

– Blinking yellow – 32 Gbps activity

– Blinking amber and yellow – Beacon

– All blinking (simultaneous) – Firmware initialized

– All blinking (alternating) – Firmware fault

iSCSI I/O card – Ports are numbered 1 to 4 from left to right

NOTE: The iSCSI I/O card supports Data Center Bridging (DCB), but the mezzanine card does not support DCB.

• The LEDs on the iSCSI ports have the following meanings:

– Off – No power

– Steady amber – Link

– Blinking green – Activity


SAS I/O card – Ports are numbered 1 to 4 from left to right

The SAS ports on SAS I/O cards do not have LEDs.

2 Identification LED Blinking blue continuously – A command was sent by Dell Storage Manager to the storage system to make the LED blink so that users can identify the storage system in the rack.

The identification LED blinks on the control panel of the chassis, which allows users to find the storage system when looking at the front of the rack.

The identification LEDs on the storage controllers also blink, which allows users to find the storage system when looking at the back of the rack.

3 Cache to Flash (C2F) • Off – Running normally

• Blinking green – Running on battery (shutting down)

4 Health status • Off – Unpowered

• Blinking amber

– Slow blinking amber (2s on, 1s off) – Controller hardware fault was detected. Use Dell Storage Manager to view specific details about the hardware fault.

– Fast blinking amber (4x per second) – Power good and the pre-operating system is booting

• Blinking green

– Slow blinking green (2s on, 1s off) – Operating system is booting

– Blinking green (1s on, 1s off) – System is in safe mode

– Fast blinking green (4x per second) – Firmware is updating

• Solid green – Running normal operation

5 Serial port (micro USB) Used under the supervision of Dell Technical Support to troubleshoot and support systems.

6 MGMT port – Ethernet port used for storage system management and access to Dell Storage Manager.

Two LEDs with the port indicate link status (left LED) and activity status (right LED):

• Link and activity indicators are off – Not connected to the network

• Link indicator is green – The NIC is connected to a valid network at its maximum port speed.

• Link indicator is amber – The NIC is connected to a valid network at less than its maximum port speed.

• Activity indicator is blinking green – Network data is being sent or received.

7 USB port One USB 2.0 connector that is used for SupportAssist diagnostic files when the storage system is not connected to the Internet.

8 Mini-SAS (ports 1 and 2) Back-end expansion ports 1 and 2. LEDs with the ports indicate connectivity information between the storage controller and the expansion enclosure:

• Steady green indicates the SAS connection is working properly.

• Steady yellow indicates the SAS connection is not working properly.

9 Mezzanine card The iSCSI ports on the mezzanine card are either 10 GbE SFP+ ports or 1 GbE/10 GbE RJ45 ports.

The LEDs on the iSCSI ports have the following meanings:

The LEDs on the iSCSI ports have the following meanings:


• Off – No connectivity

• Steady green, left LED – Link (full speed)

• Steady amber, left LED – Link (degraded speed)

• Blinking green, right LED – Activity

NOTE: The mezzanine card does not support DCB.
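The Fibre Channel port LEDs and the health status LED in the table above both encode state as a color plus a blink pattern. The Python sketch below stores those documented meanings as lookup tables. The pattern labels "slow", "1s", "fast", and "steady" are hypothetical shorthand for the blink rates listed in the table. The function names are also hypothetical. This is not a Dell API.

```python
# Sketch: look up storage controller LED meanings from the table above.
# Pattern labels and function names are hypothetical; meanings come from the table.

# Single-color activity blink -> link speed, by Fibre Channel I/O card type (Gb)
FC_ACTIVITY_SPEED = {
    16: {"amber": "4 Gbps", "green": "8 Gbps", "yellow": "16 Gbps"},
    32: {"amber": "8 Gbps", "green": "16 Gbps", "yellow": "32 Gbps"},
}

# (color, pattern) -> health status LED meaning
#   "slow" = 2 s on / 1 s off, "1s" = 1 s on / 1 s off, "fast" = 4x per second
HEALTH_STATUS = {
    ("off", "steady"): "Unpowered",
    ("amber", "slow"): "Controller hardware fault detected",
    ("amber", "fast"): "Power good; pre-operating system is booting",
    ("green", "slow"): "Operating system is booting",
    ("green", "1s"): "System is in safe mode",
    ("green", "fast"): "Firmware is updating",
    ("green", "steady"): "Normal operation",
}


def fc_link_speed(card_gb: int, blink_color: str) -> str:
    """Return the link speed implied by a single-color activity blink."""
    try:
        return FC_ACTIVITY_SPEED[card_gb][blink_color]
    except KeyError:
        raise ValueError(f"no mapping for {card_gb} Gb card, color {blink_color!r}") from None


def health_status(color: str, pattern: str) -> str:
    """Return the documented meaning of a health status LED observation."""
    return HEALTH_STATUS.get((color, pattern), "Unrecognized pattern")
```

The same blink color maps to different speeds on the two card types. For example, blinking amber means 4 Gbps activity on a 16 Gb card but 8 Gbps activity on a 32 Gb card.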

Expansion Enclosure Overview

Expansion enclosures allow the data storage capabilities of the SCv3000 and SCv3020 storage system to be expanded beyond the 16 or 30 internal drives in the storage system chassis.

• The SCv300 is a 2U expansion enclosure that supports up to 12 3.5-inch hard drives installed in a four-column, three-row configuration.

• The SCv320 is a 2U expansion enclosure that supports up to 24 2.5-inch hard drives installed vertically side by side.

• The SCv360 is a 4U expansion enclosure that supports up to 60 3.5-inch hard drives installed in a twelve-column, five-row configuration.

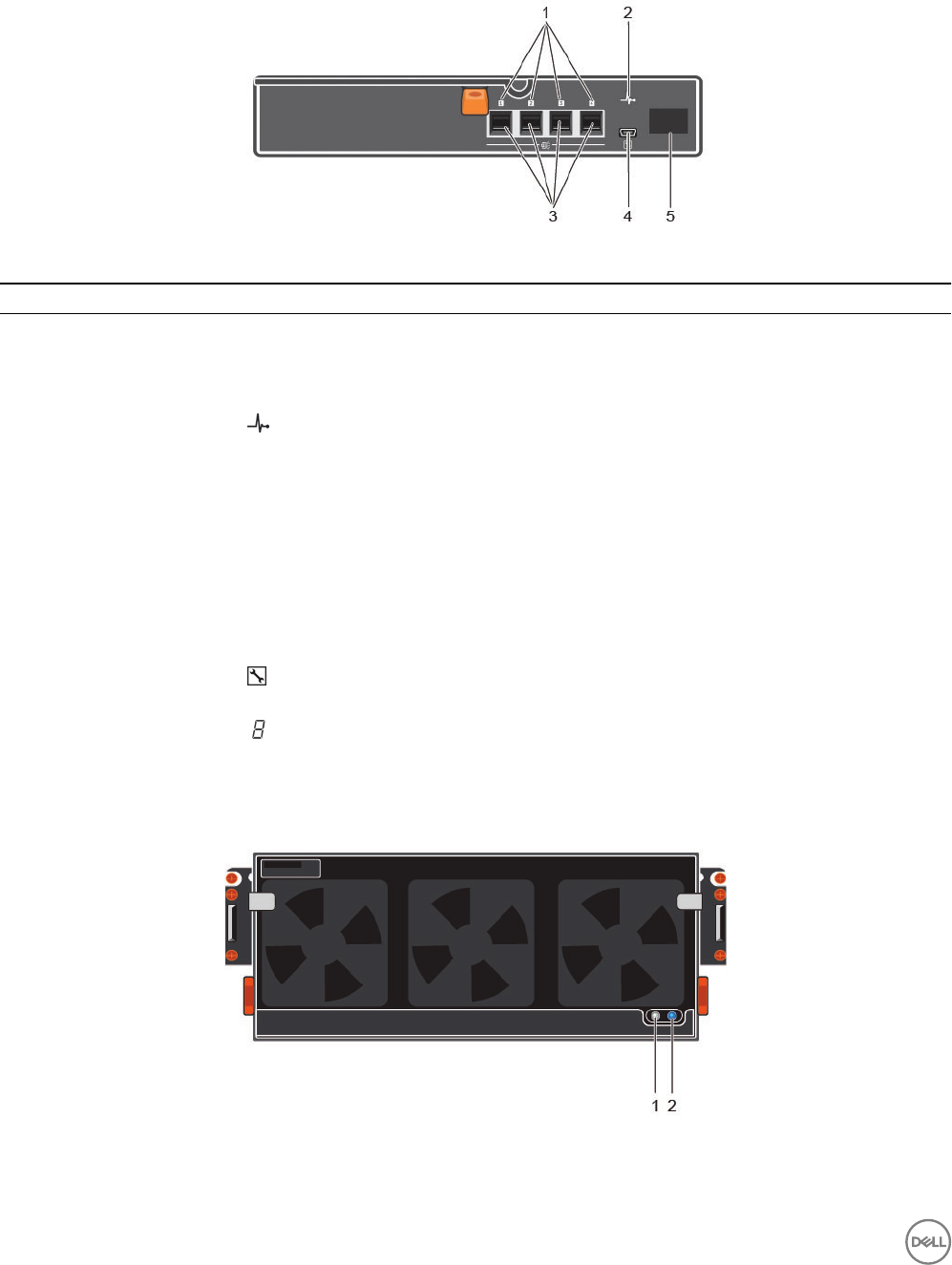

SCv300 and SCv320 Expansion Enclosure Front-Panel Features and Indicators

The front panel shows the expansion enclosure status and power supply status.

Figure 13. SCv300 Front-Panel Features and Indicators

Figure 14. SCv320 Front-Panel Features and Indicators

Item Name Description

1 System identification button – The system identification button on the front control panel can be used to locate a particular expansion enclosure within a rack. When the button is pressed, the system status indicators on the control panel and the Enclosure Management Module (EMM) blink blue until the button is pressed again.

2 Power LED – The power LED lights when at least one power supply unit is supplying power to the expansion enclosure.

3 Expansion enclosure status LED – The expansion enclosure status LED lights when the expansion enclosure power is on.


• Solid blue during normal operation.

• Blinks blue when a host server is identifying the expansion enclosure or when the system identification button is pressed.

• Blinks amber or remains solid amber for a few seconds and then turns off when the EMMs are starting or resetting.

• Blinks amber for an extended time when the expansion enclosure is in a warning state.

• Remains solid amber when the expansion enclosure is in the fault state.

4 Hard disk drives • SCv300 – Up to 12 3.5-inch SAS hot-swappable hard disk drives.

• SCv320 – Up to 24 2.5-inch SAS hot-swappable hard disk drives.

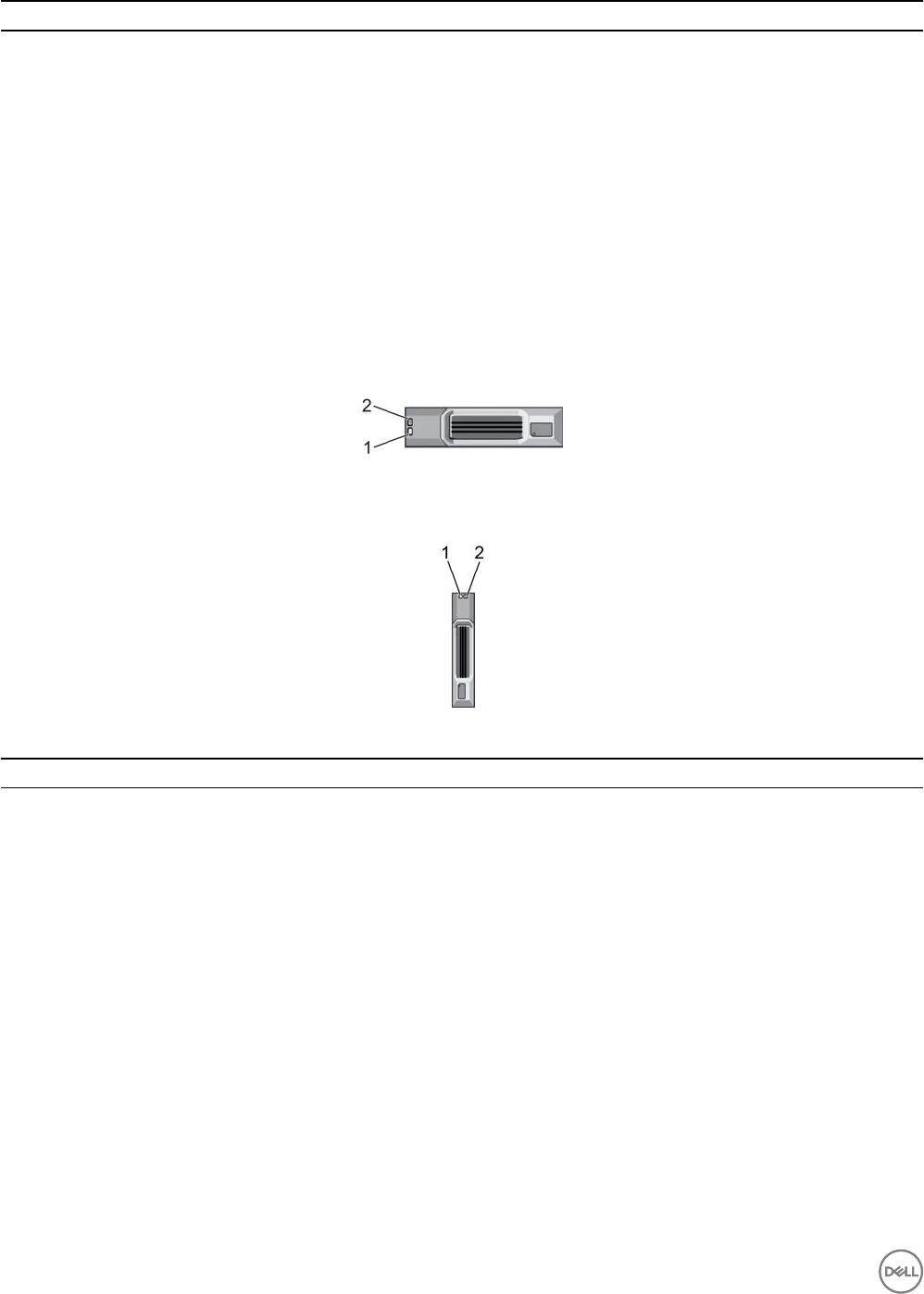

SCv300 and SCv320 Expansion Enclosure Drives

Dell Enterprise Plus Value drives are the only drives that can be installed in SCv300 and SCv320 expansion enclosures. If a non-Dell Enterprise Plus Value drive is installed, the Storage Center prevents the drive from being managed.

The drives in an SCv300 expansion enclosure are installed horizontally.

Figure 15. SCv300 Expansion Enclosure Drive Indicators

The drives in an SCv320 expansion enclosure are installed vertically.

Figure 16. SCv320 Expansion Enclosure Drive Indicators

Item Name Indicator Code

1 Drive activity indicator • Blinking green – Drive activity

• Steady green – Drive is detected and has no faults

2 Drive status indicator • Steady green – Normal operation

• Blinking green (on 1 sec. / off 1 sec.) – Drive identification is enabled

• Steady amber – Drive is safe to remove

• Off – No power to the drive

SCv300 and SCv320 Expansion Enclosure Drive Numbering

The Storage Center identifies drives as XX-YY, where XX is the unit ID of the expansion enclosure that contains the drive, and YY is the drive position inside the expansion enclosure.

An SCv300 holds up to 12 drives, which are numbered from left to right in rows starting from 0.


Figure 17. SCv300 Drive Numbering

An SCv320 holds up to 24 drives, which are numbered from left to right starting from 0.

Figure 18. SCv320 Drive Numbering

SCv300 and SCv320 Expansion Enclosure Back-Panel Features and Indicators

The back panel provides controls to power up and reset the expansion enclosure, indicators to show the expansion enclosure status, and connections for back-end cabling.

Figure 19. SCv300 and SCv320 Expansion Enclosure Back Panel Features and Indicators

Item Name Description

1 Power supply unit and cooling fan module (PS1) – 600 W power supply

2 Enclosure management module (EMM 0) – The EMM provides a data path between the expansion enclosure and the storage controllers. The EMM also provides the management functions for the expansion enclosure.

3 Enclosure management module (EMM 1) – The EMM provides a data path between the expansion enclosure and the storage controllers. The EMM also provides the management functions for the expansion enclosure.

4 Information tag – A slide-out label panel that records system information such as the Service Tag

5 Power switches (2) – Controls power for the expansion enclosure. There is one switch for each power supply.

6 Power supply unit and cooling fan module (PS2) – 600 W power supply


SCv300 and SCv320 Expansion Enclosure EMM Features and Indicators

The SCv300 and SCv320 expansion enclosure includes two enclosure management modules (EMMs) in two interface slots.

Figure 20. SCv300 and SCv320 Expansion Enclosure EMM Features and Indicators

Item Name Description

1 SAS port status (1–4)

• Green – All the links to the port are connected

• Amber – One or more links are not connected

• Off – Expansion enclosure is not connected

2 EMM status indicator

• On steady green – Normal operation

• Amber – Expansion enclosure did not boot or is not properly configured

• Blinking green – Automatic update in progress

• Blinking amber (two times per sequence) – Expansion enclosure is unable to communicate with other expansion enclosures

• Blinking amber (four times per sequence) – Firmware update failed

• Blinking amber (five times per sequence) – Firmware versions are different between the two EMMs

3 SAS ports 1–4 (Input or Output) – Provide SAS connections for cabling the storage controller to the next expansion enclosure in the chain. Used in single-port, redundant, and multichain configurations.

4 USB Mini-B (serial debug port) – Not for customer use

5 Unit ID display – Displays the expansion enclosure ID
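When the EMM status indicator blinks amber, the number of blinks per sequence identifies the condition. The Python sketch below encodes the EMM status rows of the table above. The function name and its arguments are hypothetical conventions chosen for illustration.

```python
# Sketch: interpret the SCv300/SCv320 EMM status indicator from the table above.
# The function name and argument conventions are hypothetical.
from typing import Optional

# Number of amber blinks per sequence -> documented condition
EMM_AMBER_BLINK_CODES = {
    2: "Expansion enclosure is unable to communicate with other expansion enclosures",
    4: "Firmware update failed",
    5: "Firmware versions are different between the two EMMs",
}


def emm_status(color: str, blinking: bool, blinks_per_sequence: Optional[int] = None) -> str:
    if color == "green":
        return "Automatic update in progress" if blinking else "Normal operation"
    if color == "amber":
        if not blinking:
            return "Expansion enclosure did not boot or is not properly configured"
        # Unknown or missing blink counts fall through to a generic message.
        return EMM_AMBER_BLINK_CODES.get(blinks_per_sequence, "Unrecognized amber blink code")
    raise ValueError(f"unexpected EMM status color: {color!r}")
```

For example, `emm_status("amber", blinking=True, blinks_per_sequence=5)` reports that the firmware versions differ between the two EMMs.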

SCv360 Expansion Enclosure Front-Panel Features and Indicators

The SCv360 front panel shows the expansion enclosure status and power supply status.

Figure 21. SCv360 Front-Panel Features and Indicators


Item Name Description

1 Power LED – The power LED lights when at least one power supply unit is supplying power to the expansion enclosure.

2 Expansion enclosure status LED – The expansion enclosure status LED indicates when the system is being identified or when the expansion enclosure is in the fault state.

• Off during normal operation.

• Blinks blue when a host server is identifying the expansion enclosure or when the system identification button is pressed.

• Remains solid blue when the expansion enclosure is in the fault state.

SCv360 Expansion Enclosure Drives

Dell Enterprise Plus drives are the only drives that can be installed in SCv360 expansion enclosures. If a non-Dell Enterprise Plus drive is installed, the Storage Center prevents the drive from being managed.

The drives in an SCv360 expansion enclosure are installed horizontally.

Figure 22. SCv360 Drive Indicators

| Item | Name | Indicator code |
|---|---|---|
| 1 | Drive activity indicator | • Blinking blue – Drive activity<br>• Steady blue – Drive is detected and has no faults |
| 2 | Drive status indicator | • Off – Normal operation<br>• Blinking amber (on 1 sec. / off 1 sec.) – Drive identification is enabled<br>• Steady amber – Drive has a fault |

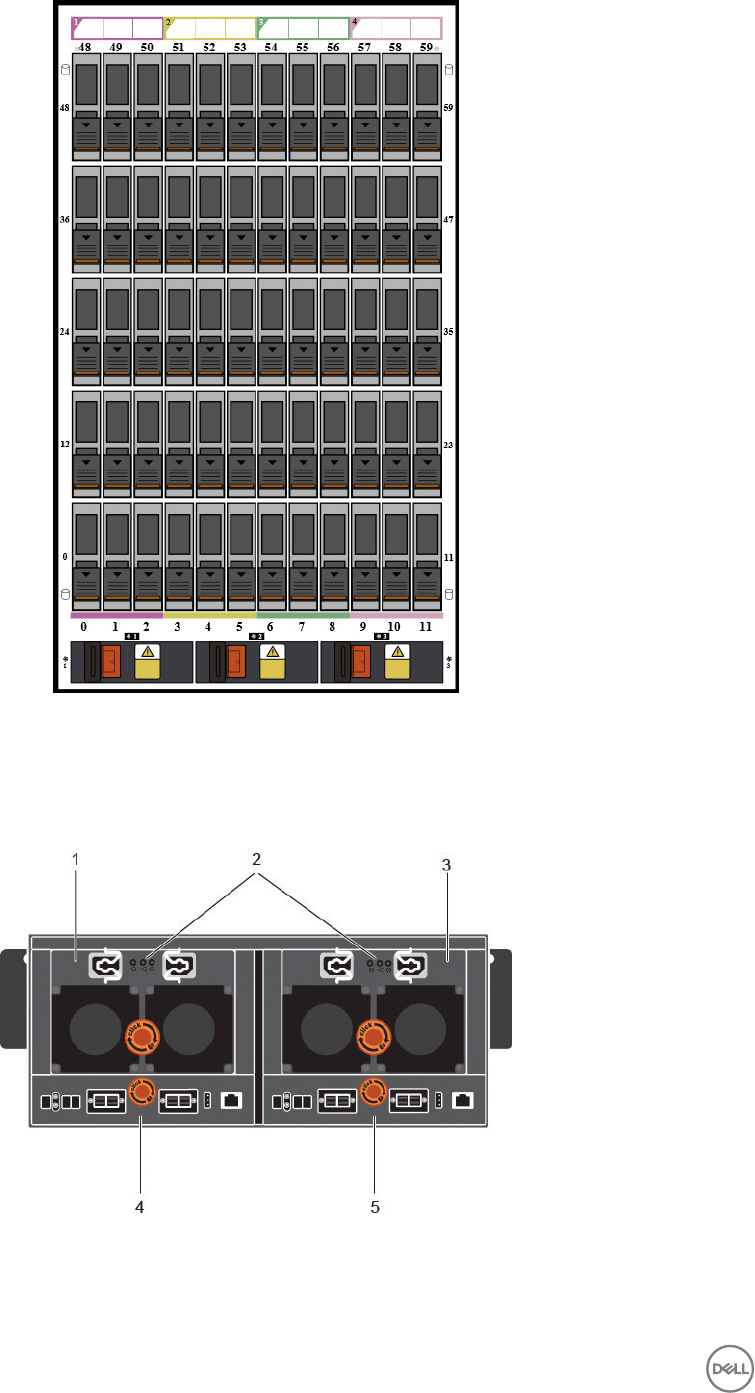

SCv360 Expansion Enclosure Drive Numbering

The Storage Center identifies drives as XX-YY, where XX is the unit ID of the expansion enclosure that contains the drive, and YY is the drive position inside the expansion enclosure.

An SCv360 holds up to 60 drives, which are numbered from left to right in rows starting from 0.


Figure 23. SCv360 Drive Numbering
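The XX-YY scheme can be sketched in a few lines of Python. This is illustrative only, and it rests on several assumptions:

- The function names are hypothetical and are not part of Storage Center or Dell Storage Manager.
- The layout of 12 drives per row (five rows of 12) is assumed, because this section does not state it. Check Figure 23 for the actual arrangement.
- The two-digit zero padding in the label is also assumed.

The sketch numbers positions row by row, left to right, starting from 0, as the text describes.

```python
"""Sketch of the SCv360 XX-YY drive numbering (hypothetical helpers, not a Dell API)."""

DRIVES_PER_ENCLOSURE = 60  # SCv360 capacity, as stated in the guide
# Assumption: 12 drives per row (5 rows of 12). The guide only says drives are
# numbered left to right in rows starting from 0.
DRIVES_PER_ROW = 12


def drive_position(row: int, column: int, drives_per_row: int = DRIVES_PER_ROW) -> int:
    """Return the zero-based position YY of the drive at (row, column), both counted from 0."""
    if not 0 <= column < drives_per_row:
        raise ValueError(f"column {column} is outside 0..{drives_per_row - 1}")
    position = row * drives_per_row + column  # row-major, left to right
    if not 0 <= position < DRIVES_PER_ENCLOSURE:
        raise ValueError(f"row {row} puts the drive outside 0..{DRIVES_PER_ENCLOSURE - 1}")
    return position


def drive_label(unit_id: int, position: int) -> str:
    """Format a drive identifier as XX-YY (two-digit zero padding is an assumption)."""
    if not 0 <= position < DRIVES_PER_ENCLOSURE:
        raise ValueError(f"position {position} is outside 0..{DRIVES_PER_ENCLOSURE - 1}")
    return f"{unit_id:02d}-{position:02d}"


def parse_drive_label(label: str) -> tuple[int, int]:
    """Split an XX-YY identifier back into (unit ID, drive position)."""
    unit_text, position_text = label.split("-")
    return int(unit_text), int(position_text)


if __name__ == "__main__":
    # Unit 2, second row (row 1), fourth drive from the left (column 3).
    label = drive_label(2, drive_position(1, 3))
    print(label)
    print(parse_drive_label(label))
```

Under these assumptions, the fourth drive from the left in the second row of unit 2 is position 15 and would be identified as 02-15.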

SCv360 Expansion Enclosure Back Panel Features and Indicators

The SCv360 back panel provides controls to power up and reset the expansion enclosure, indicators to show the expansion enclosure status, and connections for back-end cabling.

Figure 24. SCv360 Back Panel Features and Indicators


| Item | Name | Description |
|---|---|---|
| 1 | Power supply unit and cooling fan module (PS1) | Contains redundant 900 W power supplies and fans that provide cooling for the expansion enclosure. |
| 2 | Power supply indicators:<br>• AC power indicator for power supply 1<br>• Power supply/cooling fan indicator<br>• AC power indicator for power supply 2 | AC power indicators:<br>• Green – Normal operation. The power supply module is supplying AC power to the expansion enclosure.<br>• Off – The power switch is off, or the power supply is not connected to AC power or has a fault condition.<br>• Flashing green – AC power is applied but is out of specification.<br>Power supply/cooling fan indicator:<br>• Amber – A power supply/cooling fan fault is detected.<br>• Off – Normal operation. |
| 3 | Power supply unit and cooling fan module (PS2) | Contains redundant 900 W power supplies and fans that provide cooling for the expansion enclosure. |
| 4 | Enclosure management module 1 | EMMs provide the data path and management functions for the expansion enclosure. |
| 5 | Enclosure management module 2 | EMMs provide the data path and management functions for the expansion enclosure. |

SCv360 Expansion Enclosure EMM Features and Indicators

The SCv360 includes two enclosure management modules (EMMs) in two interface slots.

Figure 25. SCv360 EMM Features and Indicators

| Item | Name | Description |
|---|---|---|
| 1 | EMM status indicator | • Off – Normal operation<br>• Amber – A fault has been detected<br>• Blinking amber (two times per sequence) – Expansion enclosure is unable to communicate with other expansion enclosures<br>• Blinking amber (four times per sequence) – Firmware update failed<br>• Blinking amber (five times per sequence) – Firmware versions are different between the two EMMs |
| 2 | SAS port status indicator | • Blue – All the links to the port are connected<br>• Blinking blue – One or more links are not connected<br>• Off – Expansion enclosure is not connected |
| 3 | Unit ID display | Displays the expansion enclosure ID |
| 4 | EMM power indicator | • Blue – Normal operation<br>• Off – Power is not connected |
| 5 | SAS ports 1–4 (input or output) | Provide SAS connections for cabling the storage controller to the next expansion enclosure in the chain (single-port, redundant, and multichain configurations). |
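The SCv300/SCv320 EMM and the SCv360 EMM use different conventions for the EMM status indicator. The following Python sketch transcribes both EMM status tables into a lookup keyed by enclosure model. Note the following:

- The state labels are hypothetical shorthand: "off", "steady green", "blinking green", "amber" for a steady amber light, and "amber xN" for amber blinking N times per sequence.
- The function name is illustrative and is not a Dell tool or API.

```python
"""Hypothetical EMM status lookup transcribed from the SCv300/SCv320 and SCv360 EMM tables."""

# Amber blink codes that have the same meaning on both EMM types.
SHARED_AMBER_BLINKS = {
    "amber x2": "Expansion enclosure is unable to communicate with other expansion enclosures",
    "amber x4": "Firmware update failed",
    "amber x5": "Firmware versions are different between the two EMMs",
}

EMM_STATUS = {
    "SCv300/SCv320": {
        "steady green": "Normal operation",
        "amber": "Expansion enclosure did not boot or is not properly configured",
        "blinking green": "Automatic update in progress",
        **SHARED_AMBER_BLINKS,
    },
    "SCv360": {
        "off": "Normal operation",
        "amber": "Fault has been detected",
        **SHARED_AMBER_BLINKS,
    },
}


def decode_emm_status(model: str, state: str) -> str:
    """Return the documented meaning of an EMM status indicator state for one model.

    Raises ValueError for an unknown model or a state the tables do not list.
    """
    try:
        states = EMM_STATUS[model]
    except KeyError:
        raise ValueError(f"unknown enclosure model: {model!r}") from None
    try:
        return states[state]
    except KeyError:
        raise ValueError(f"state {state!r} is not listed for {model}") from None


if __name__ == "__main__":
    print(decode_emm_status("SCv360", "off"))
    print(decode_emm_status("SCv300/SCv320", "amber x4"))
```

Keying the lookup by model matters because an unlit EMM status indicator means normal operation on an SCv360. On an SCv300 or SCv320, an unlit indicator is not a listed state, because normal operation there is steady green.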


2

Install the Storage Center Hardware

This section describes how to unpack the Storage Center equipment, prepare for the installation, mount the equipment in a rack, and install the drives.

Unpacking Storage Center Equipment

Unpack the storage system and identify the items in your shipment.

Figure 26. SCv3000 and SCv3020 Storage System Components

1. Documentation
2. Storage system
3. Rack rails
4. USB cables (2)
5. Power cables (2)
6. Front bezel

Safety Precautions

Always follow these safety precautions to avoid injury and damage to Storage Center equipment.

If equipment described in this section is used in a manner not specified by Dell, the protection provided by the equipment could be impaired. For your safety and protection, observe the rules described in the following sections.

NOTE: See the safety and regulatory information that shipped with each Storage Center component. Warranty information is included within this document or as a separate document.

Installation Safety Precautions

Follow these safety precautions:

• Dell recommends that only individuals with rack-mounting experience install the storage system in a rack.

• Make sure the storage system is always fully grounded to prevent damage from electrostatic discharge.


• When handling the storage system hardware, use an electrostatic wrist guard (not included) or a similar form of protection.

The chassis must be mounted in a rack. The following safety requirements must be considered when the chassis is being mounted:

• The rack construction must be capable of supporting the total weight of the installed chassis. The design should incorporate stabilizing features suitable to prevent the rack from tipping or being pushed over during installation or in normal use.

• When loading a rack with chassis, fill from the bottom up; empty from the top down.

• To avoid danger of the rack toppling over, slide only one chassis out of the rack at a time.

Electrical Safety Precautions

Always follow electrical safety precautions to avoid injury and damage to Storage Center equipment.

WARNING: Disconnect power from the storage system when removing or installing components that are not hot-swappable. When disconnecting power, first power down the storage system using the Storage Manager and then unplug the power cords from all the power supplies in the storage system.

• Provide a suitable power source with electrical overload protection. All Storage Center components must be grounded before applying power. Make sure that a safe electrical earth connection can be made to power supply cords. Check the grounding before applying power.

• The plugs on the power supply cords are used as the main disconnect device. Make sure that the socket outlets are located near the equipment and are easily accessible.

• Know the locations of the equipment power switches and the room's emergency power-off switch, disconnection switch, or electrical outlet.

• Do not work alone when working with high-voltage components.

• Use rubber mats specifically designed as electrical insulators.

• Do not remove covers from the power supply unit. Disconnect the power connection before removing a power supply from the storage system.

• Do not remove a faulty power supply unless you have a replacement module of the correct type ready for insertion. A faulty power supply must be replaced with a fully operational power supply module within 24 hours.

• Unplug the storage system chassis before you move it or if you think it has become damaged in any way. When the chassis is powered by multiple AC sources, disconnect all power sources for complete isolation.

Electrostatic Discharge Precautions

Always follow electrostatic discharge (ESD) precautions to avoid injury and damage to Storage Center equipment.

Electrostatic discharge (ESD) is generated by two objects with different electrical charges coming into contact with each other. The resulting electrical discharge can damage electronic components and printed circuit boards. Follow these guidelines to protect your equipment from ESD:

• Dell recommends that you always use a static mat and static strap while working on components in the interior of the chassis.

• Observe all conventional ESD precautions when handling plug-in modules and components.

• Use a suitable ESD wrist or ankle strap.

• Avoid contact with backplane components and module connectors.

• Keep all components and printed circuit boards (PCBs) in their antistatic bags until ready for use.

General Safety Precautions

Always follow general safety precautions to avoid injury and damage to Storage Center equipment.

• Keep the area around the storage system chassis clean and free of clutter.

• Place any system components that have been removed away from the storage system chassis or on a table so that they are not in the way of other people.

• While working on the storage system chassis, do not wear loose clothing such as neckties and unbuttoned shirt sleeves. These items can come into contact with electrical circuits or be pulled into a cooling fan.

• Remove any jewelry or metal objects from your body. Metal items are excellent electrical conductors that can create short circuits and harm you if they come into contact with printed circuit boards or areas where power is present.


• Do not lift the storage system chassis by the handles of the power supply units (PSUs). They are not designed to hold the weight of the entire chassis, and the chassis cover could become bent.

• Before moving the storage system chassis, remove the PSUs to minimize weight.

• Do not remove drives until you are ready to replace them.

NOTE: To ensure proper storage system cooling, hard drive blanks must be installed in any hard drive slot that is not occupied.

Prepare the Installation Environment

Make sure that the environment is ready for installing the Storage Center.

• Rack Space – The rack must have enough space to accommodate the storage system chassis, expansion enclosures, and switches.

• Power – Power must be available in the rack, and the power delivery system must meet the requirements of the Storage Center. AC input to the power supply is 200–240 V.

• Connectivity – The rack must be wired for connectivity to the management network and any networks that carry front-end I/O from the Storage Center to servers.

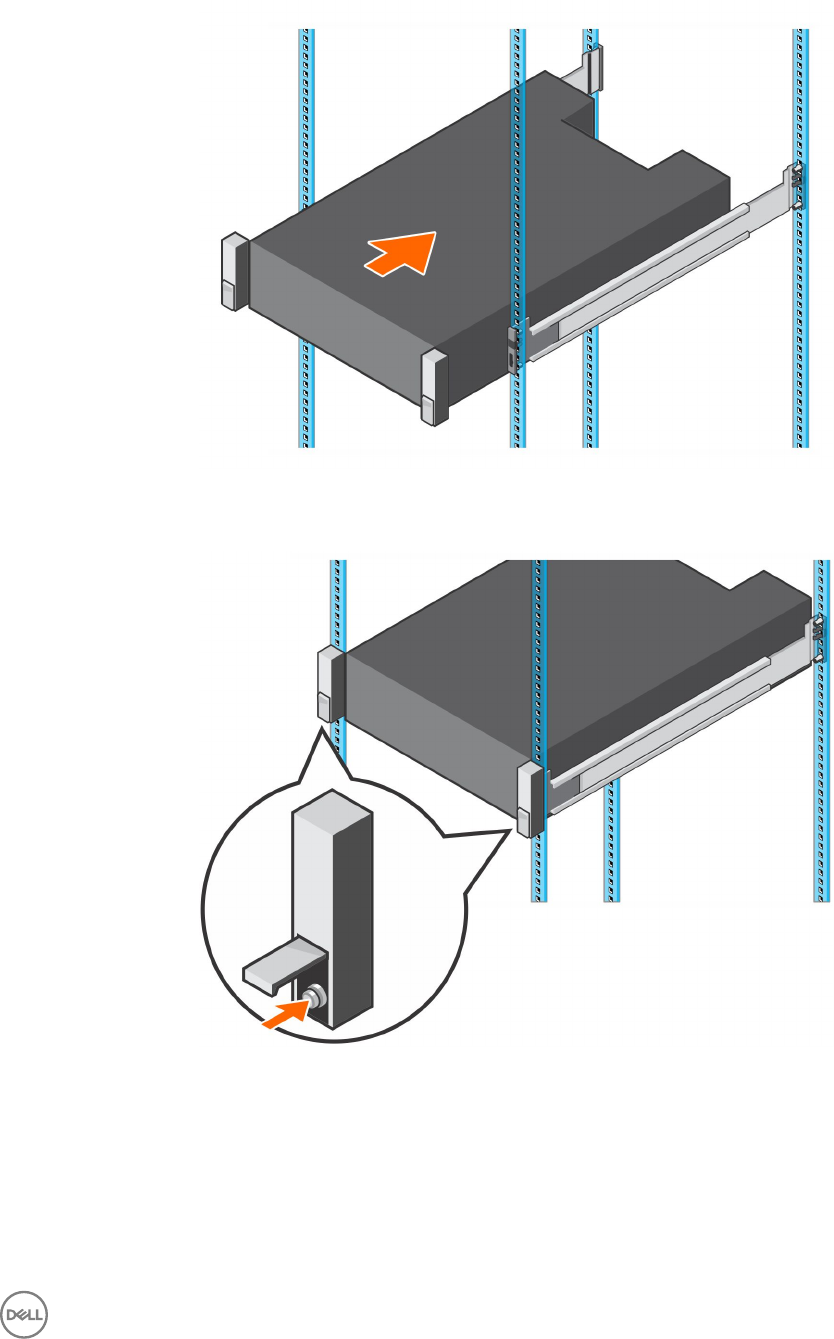

Install the Storage System in a Rack

Install the storage system and other Storage Center system components in a rack.

About this task

Mount the storage system and expansion enclosures in a manner that allows for expansion in the rack and prevents the rack from becoming top-heavy.

The SCv3000 and SCv3020 storage system ships with a ReadyRails II kit. The rails come in two different styles: tool-less and tooled.

Follow the detailed installation instructions located in the rail kit box for your particular style of rails.

NOTE: Dell recommends using two people to install the rails, one at the front of the rack and one at the back.

Steps

1. Position the left and right rail end pieces labeled FRONT facing inward.

2. Align each end piece with the top and bottom holes of the appropriate U space.

Figure 27. Attach the Rails to the Rack

3. Engage the back end of the rail until it fully seats and the latch locks into place.


4. Engage the front end of the rail until it fully seats and the latch locks into place.

5. Align the system with the rails and slide the storage system into the rack.

Figure 28. Slide the Storage System Onto the Rails

6. Lift the latches on each side of the front panel and tighten the screws to the rack.

Figure 29. Tighten the Screws

If the Storage Center system includes expansion enclosures, mount the expansion enclosures in the rack. See the instructions included with the expansion enclosure for detailed steps.


3

Connect the Front-End Cabling

Front-end cabling refers to the connections between the storage system and external devices such as host servers or another

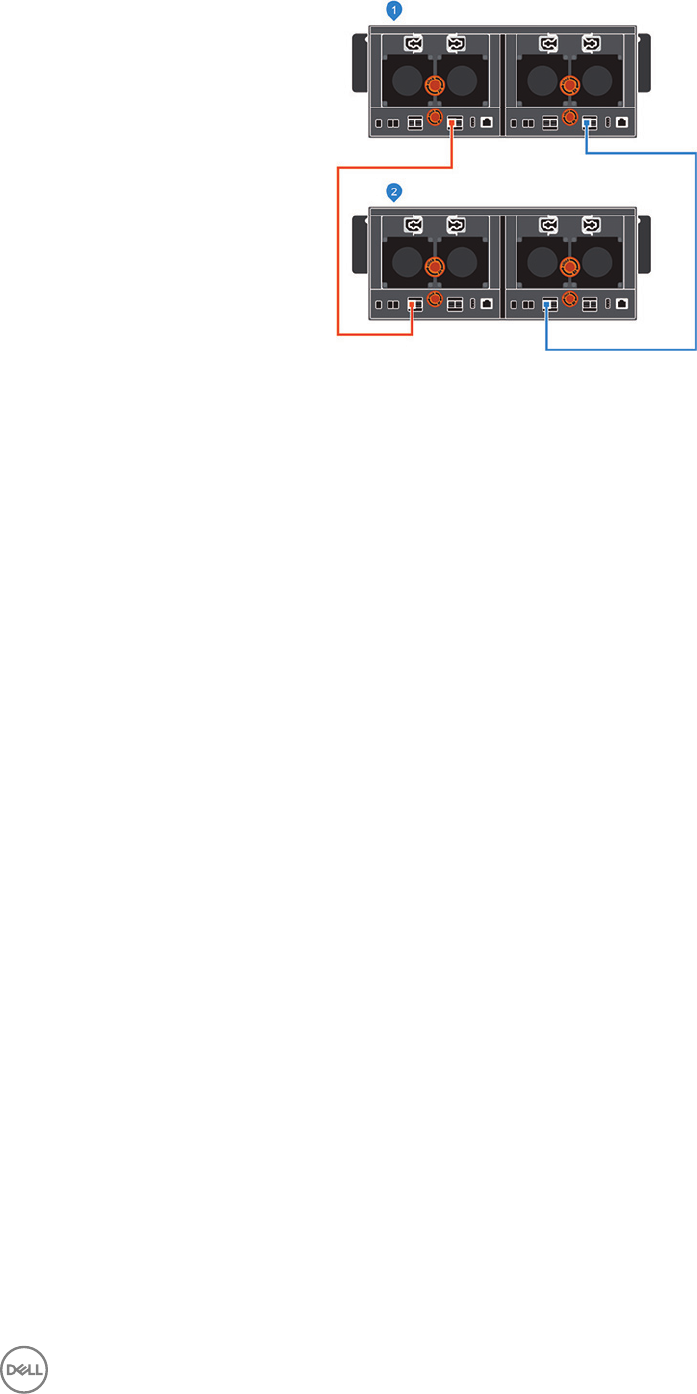

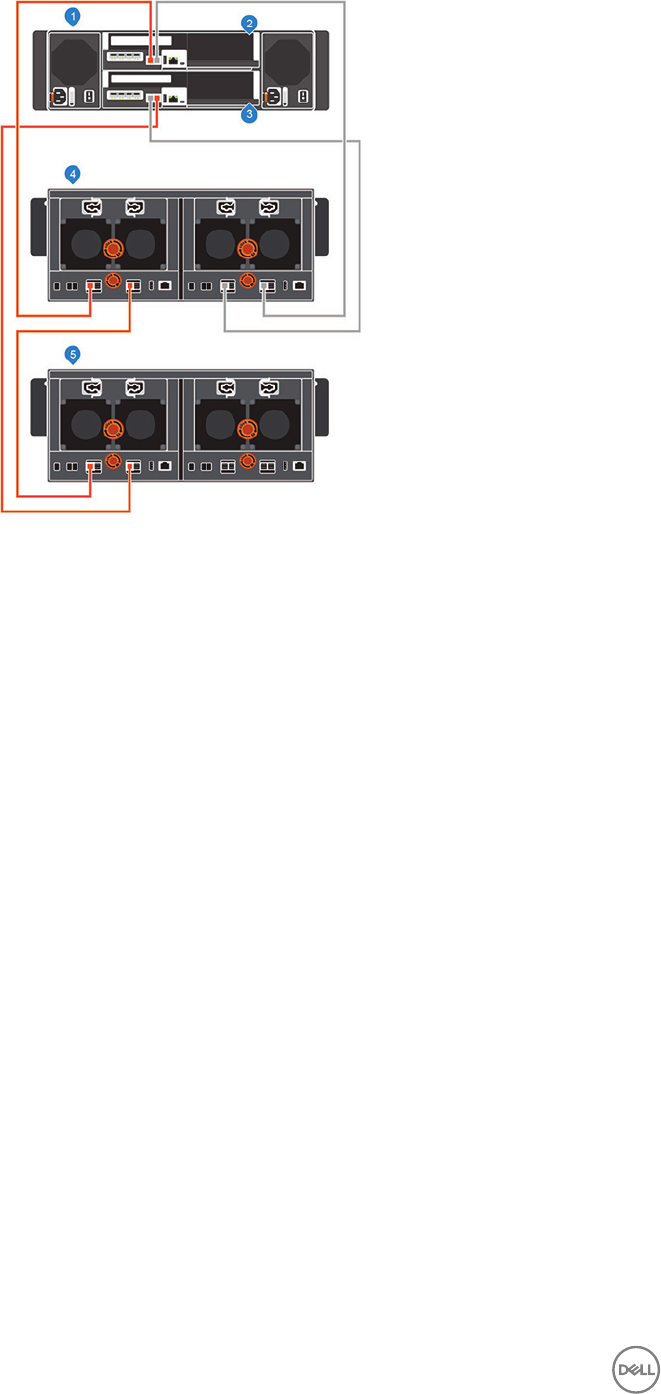

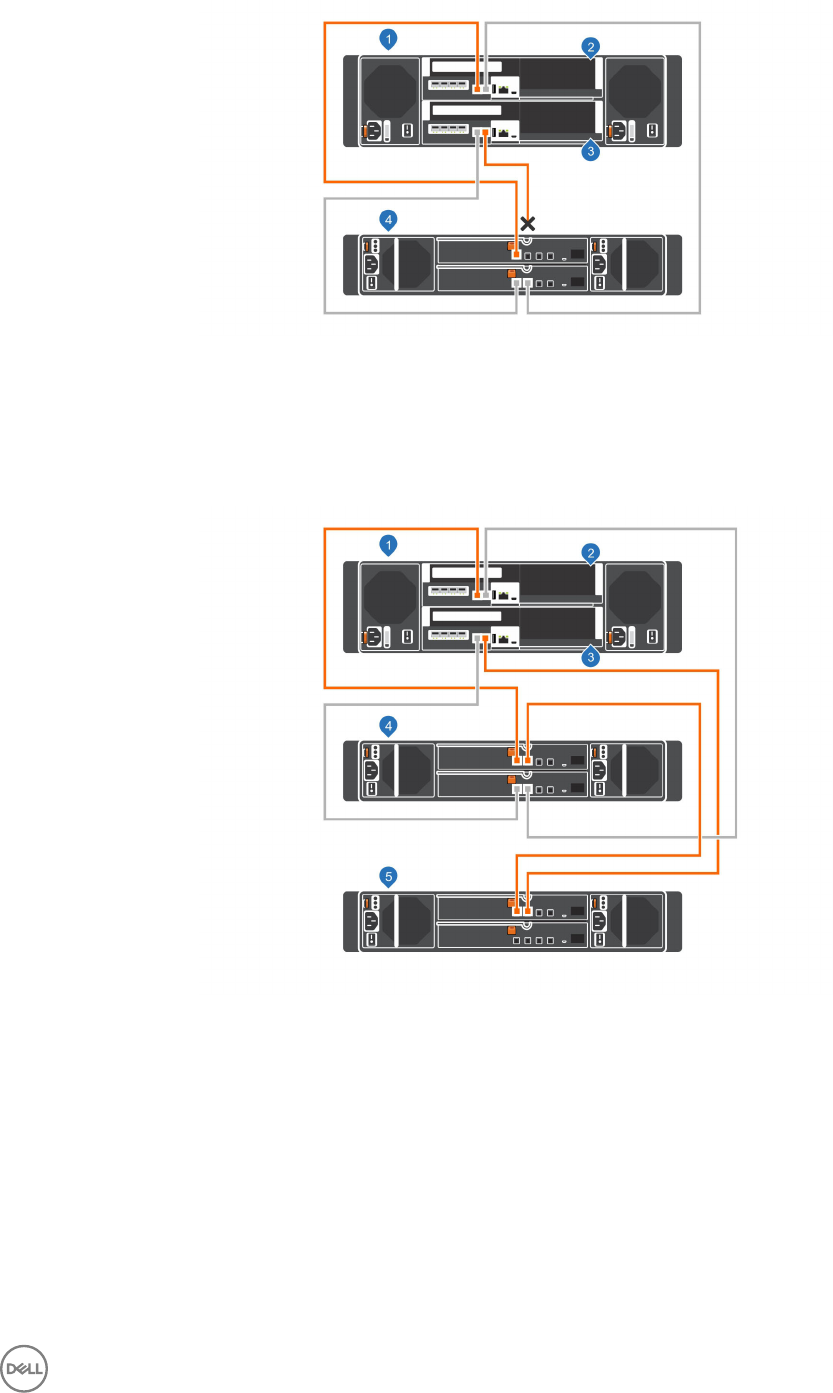

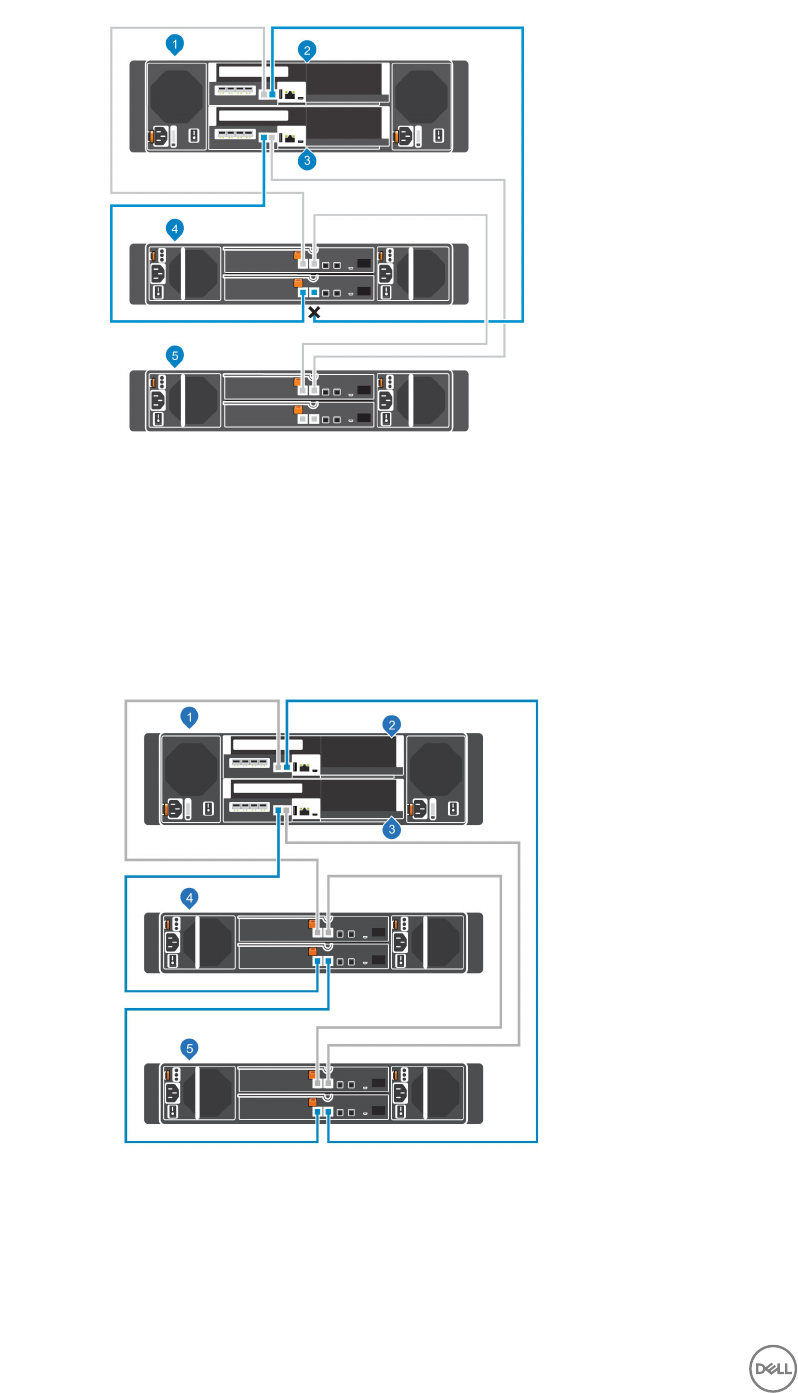

Storage Center.