The CERT Guide to Insider Threats: How to Prevent, Detect, and Respond to Information Technology Crimes (Theft, Sabotage, Fraud), 2012


Page Count: 430

- Contents

- Preface

- Acknowledgments

- Chapter 1. Overview

- True Stories of Insider Attacks

- The Expanding Complexity of Insider Threats

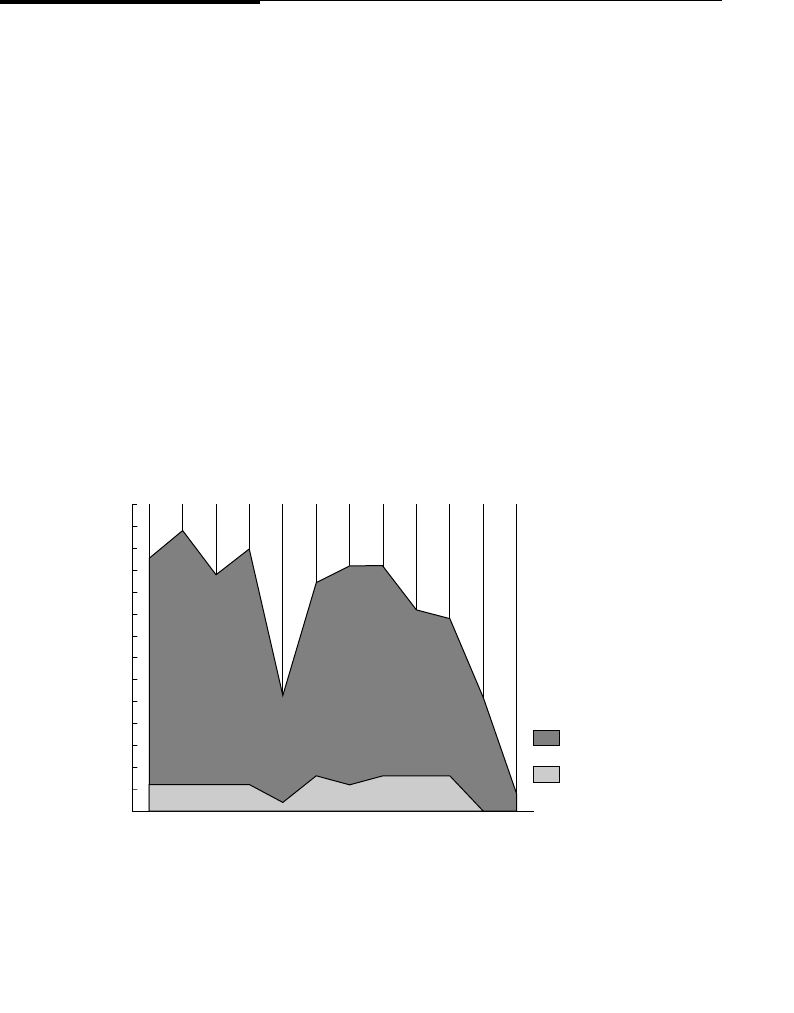

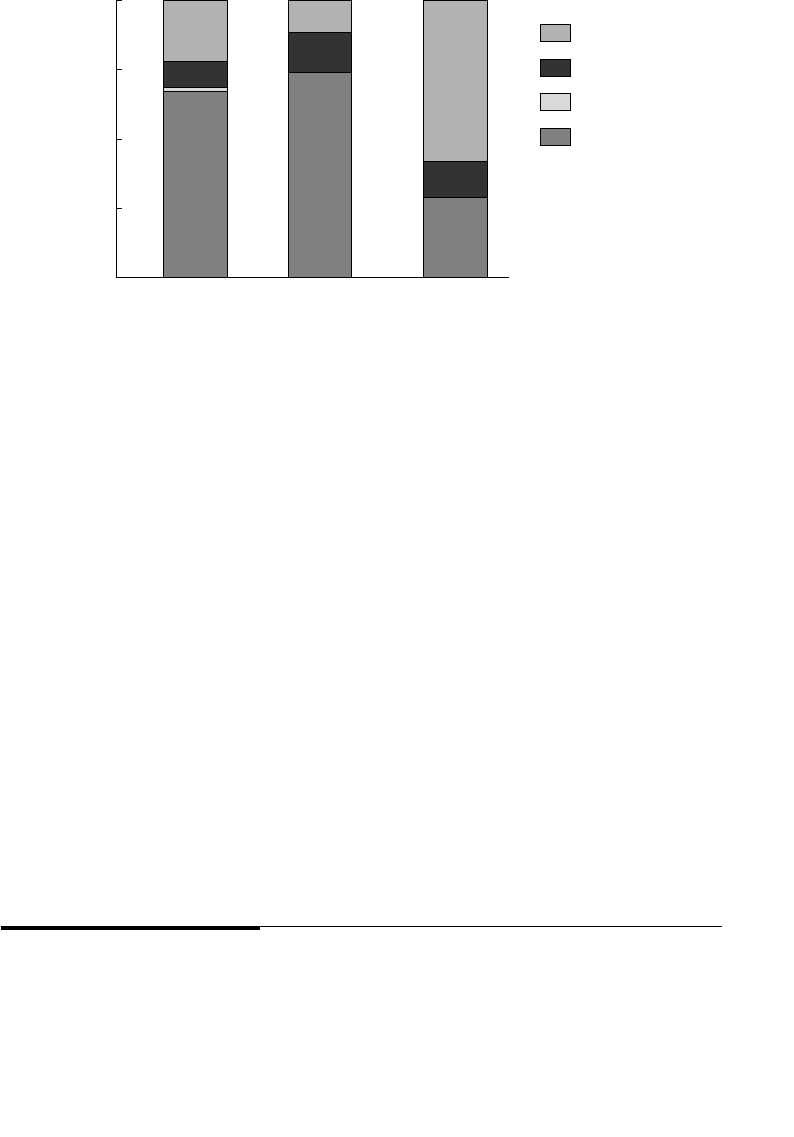

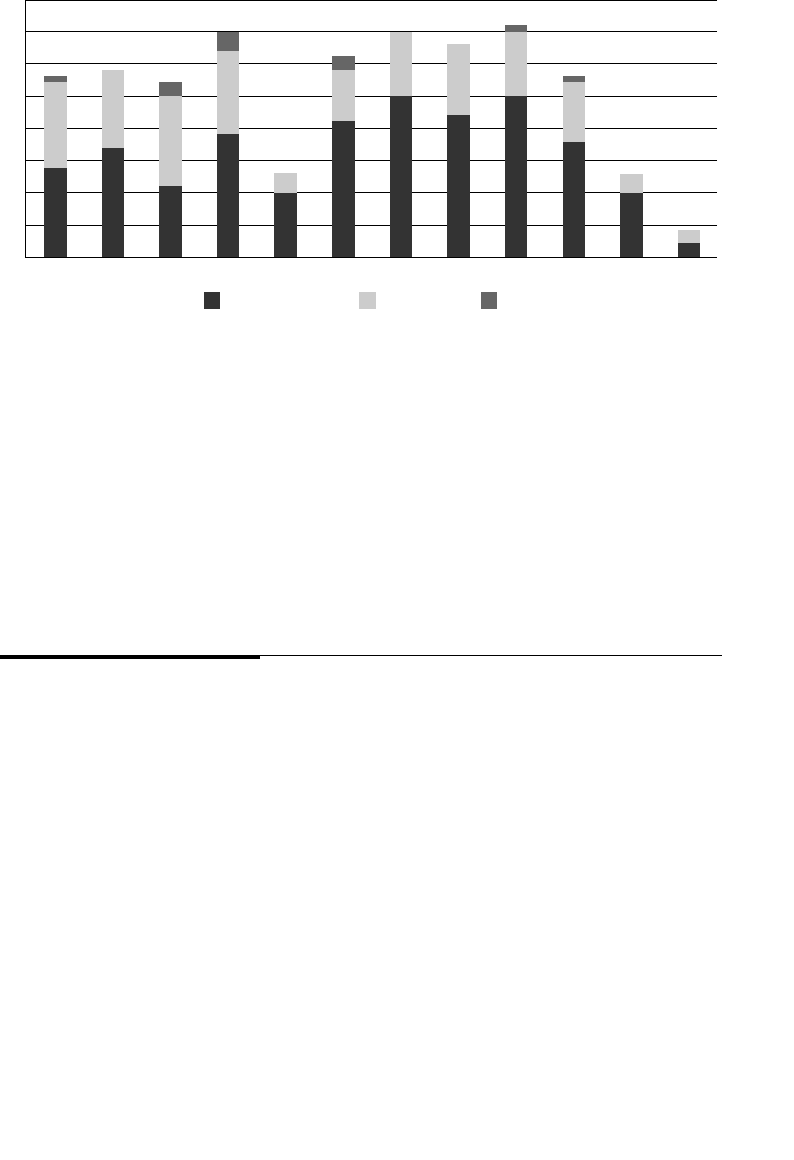

- Breakdown of Cases in the Insider Threat Database

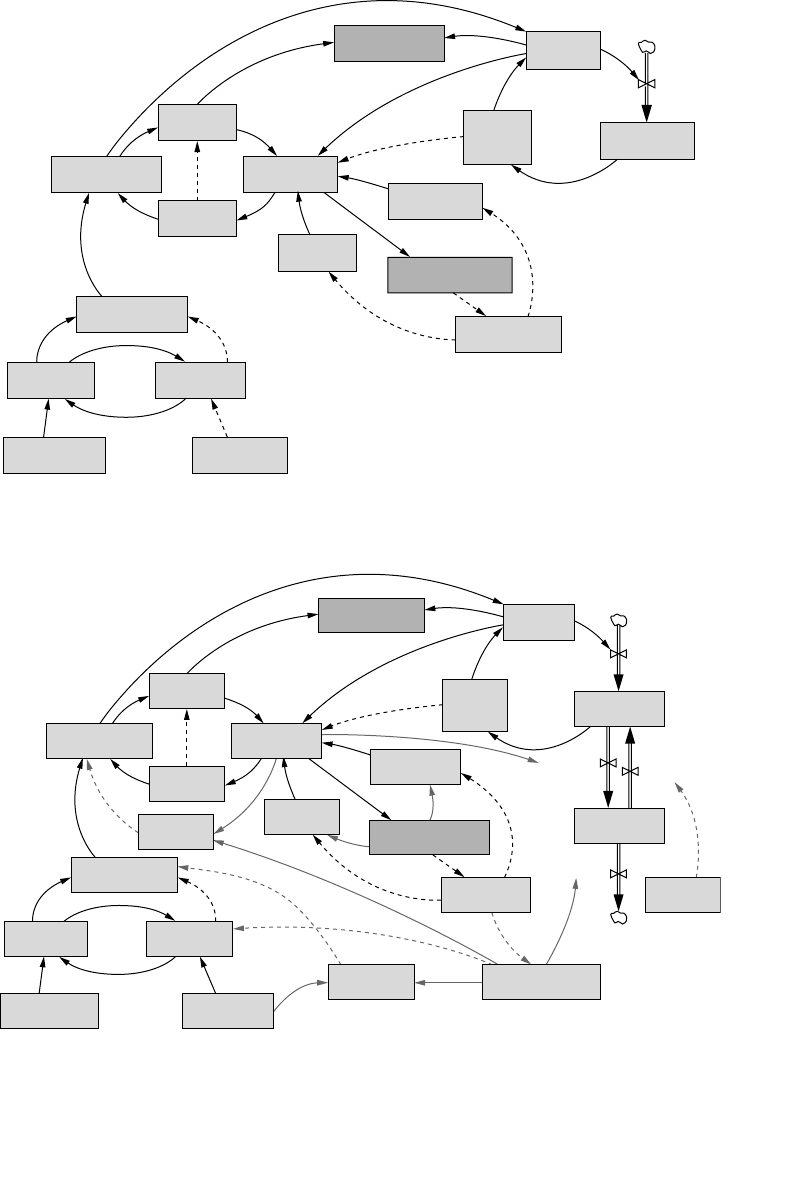

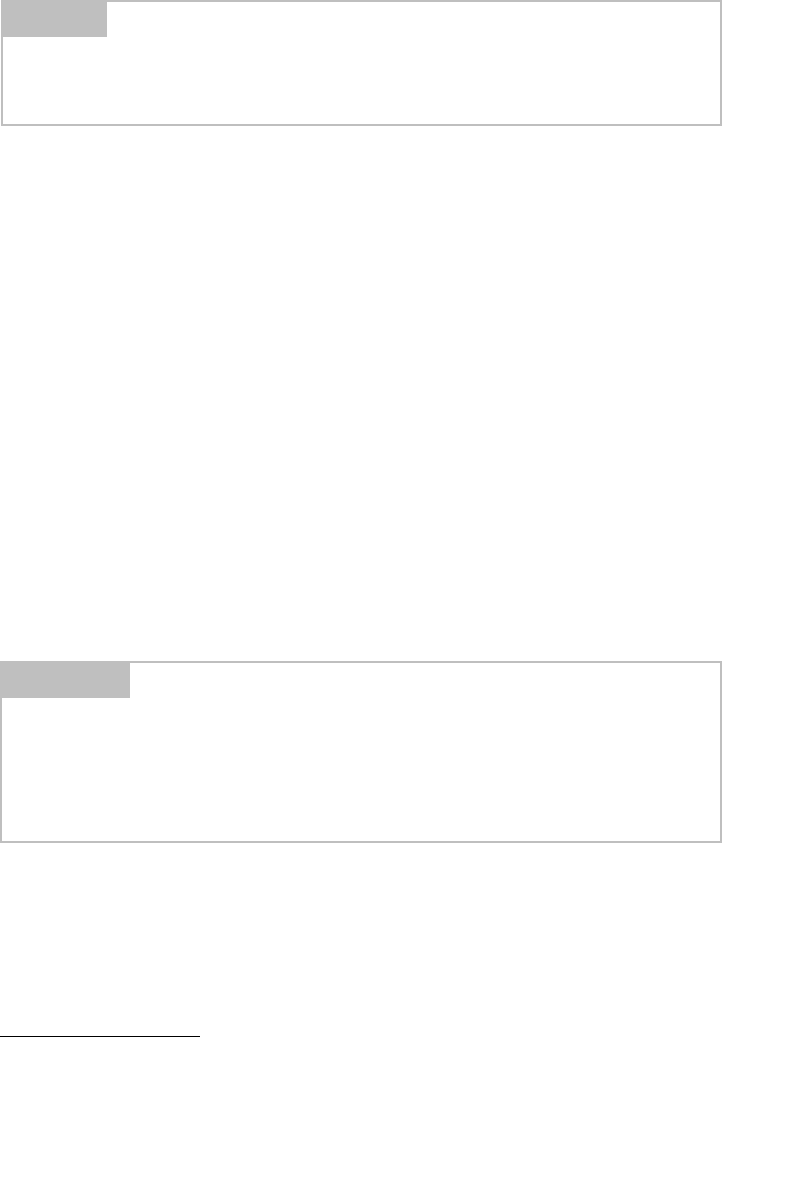

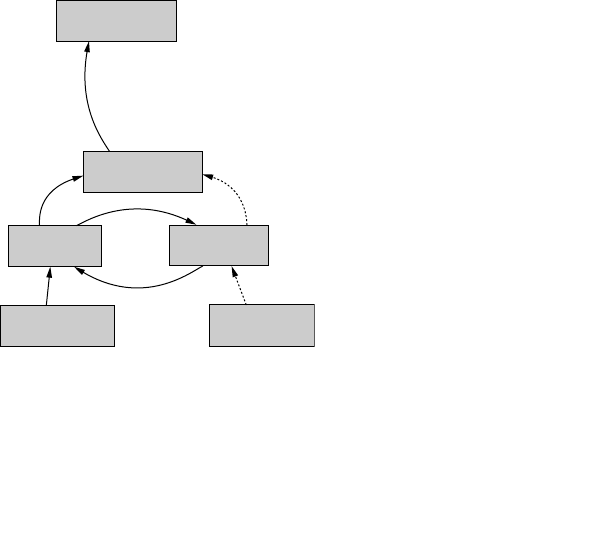

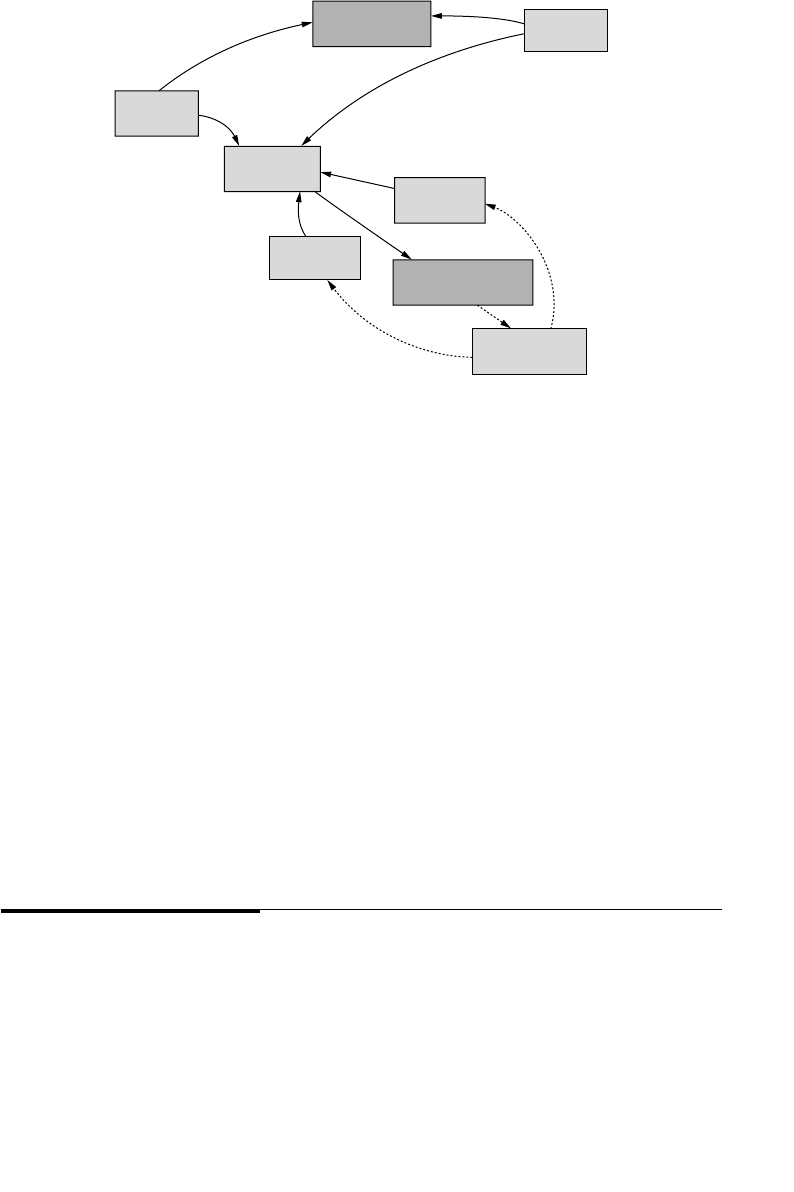

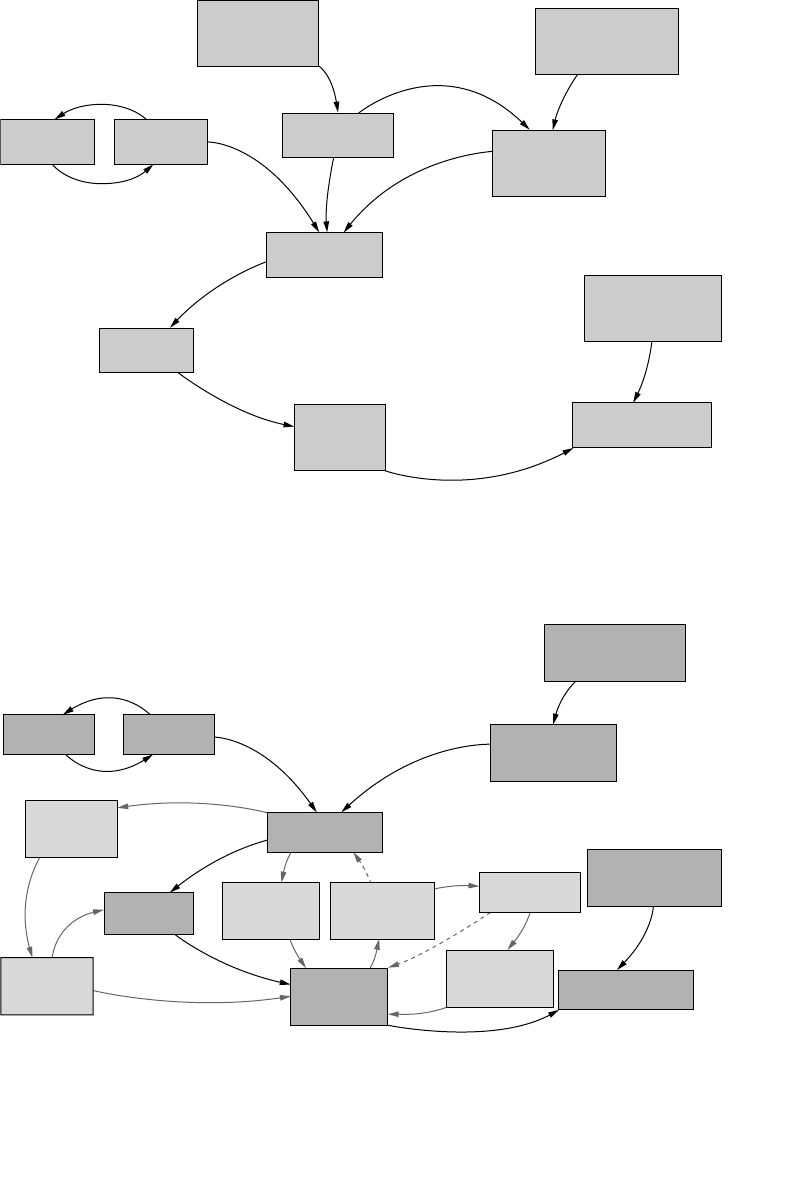

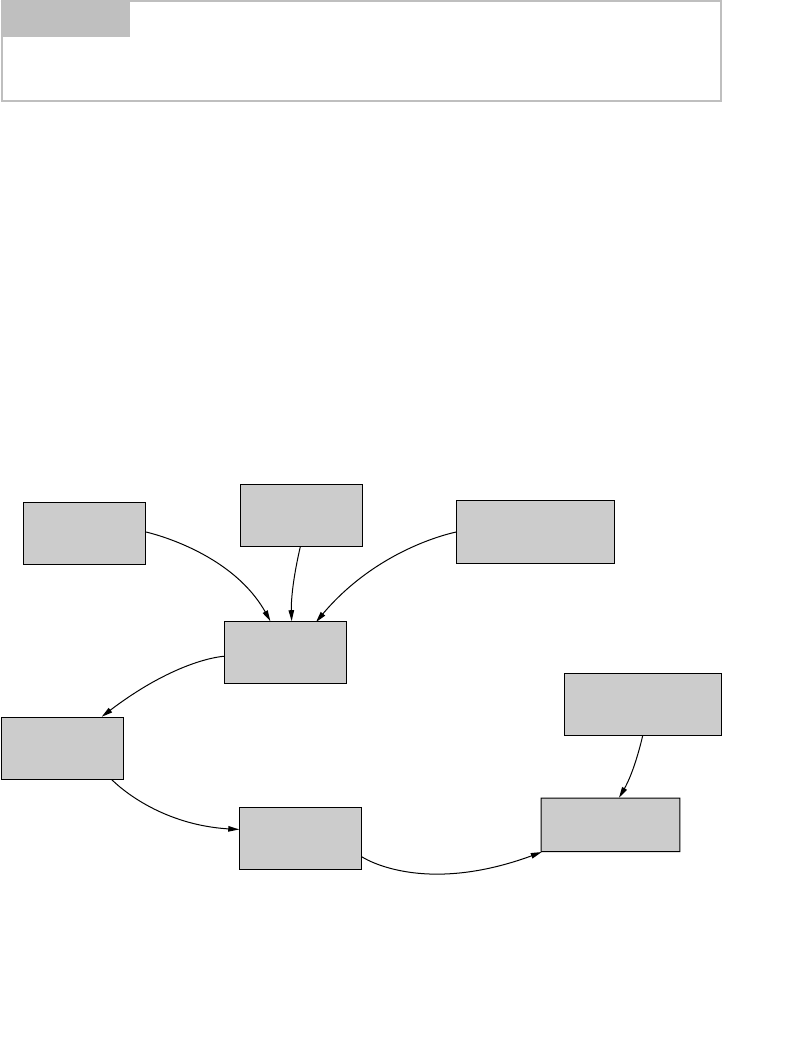

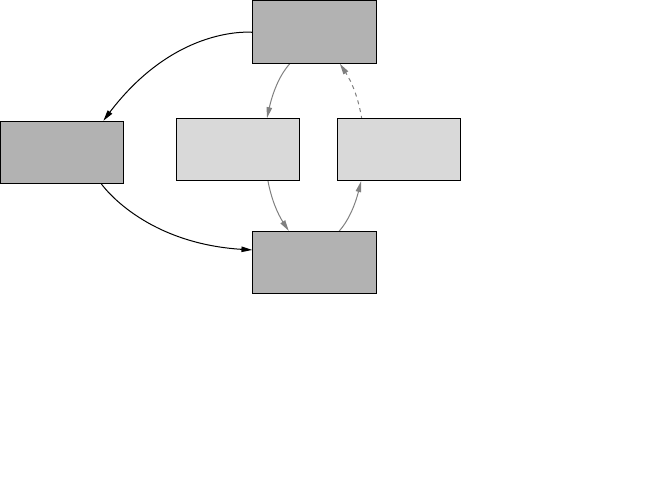

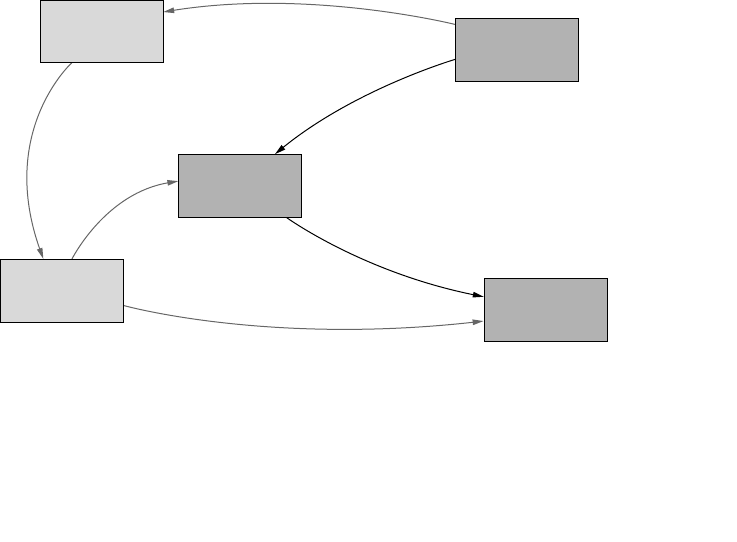

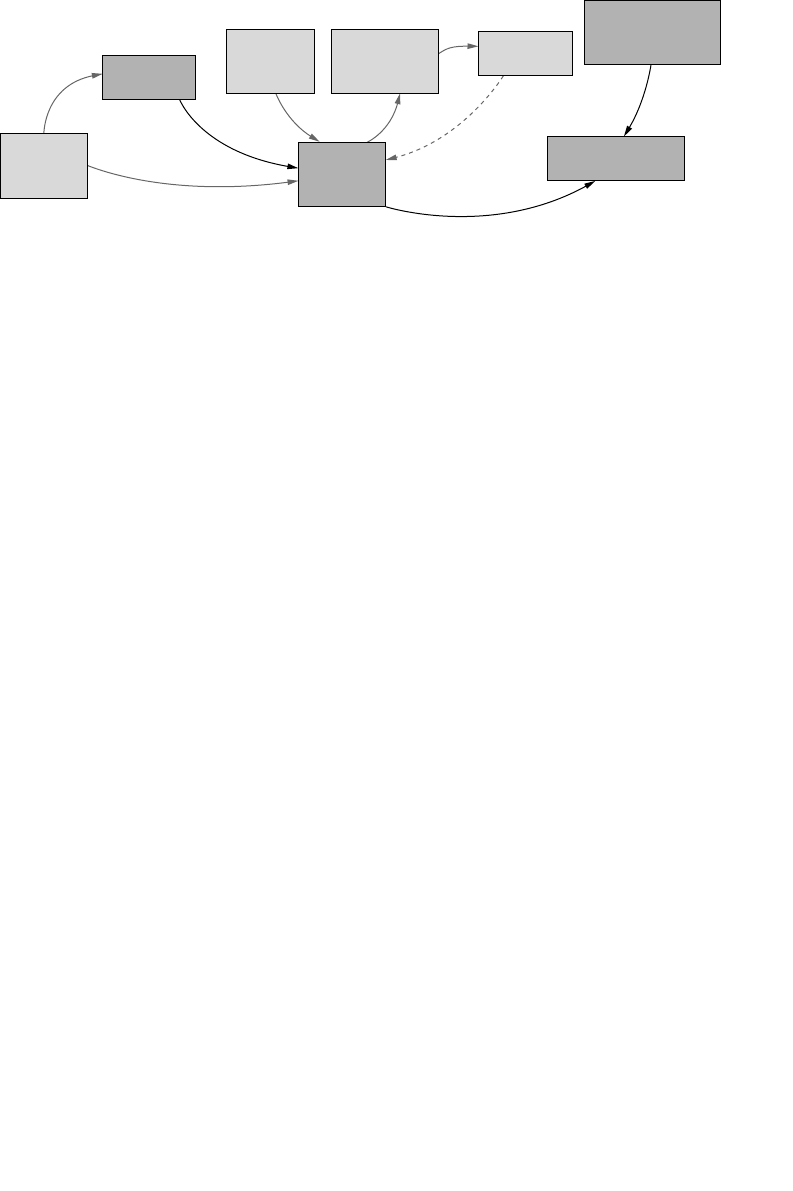

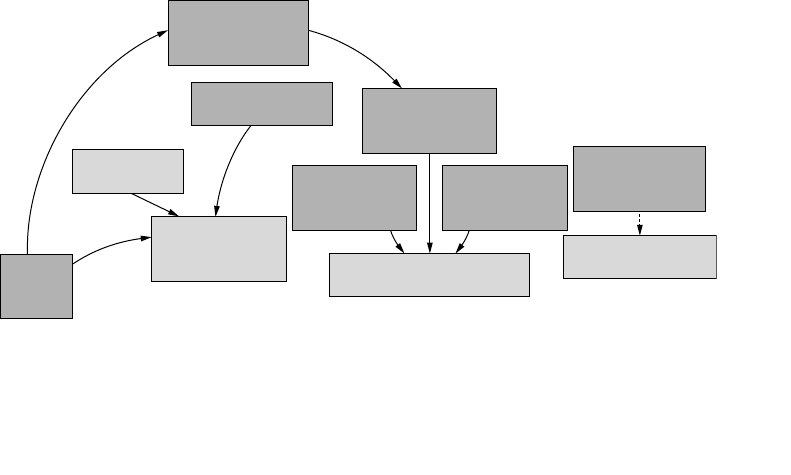

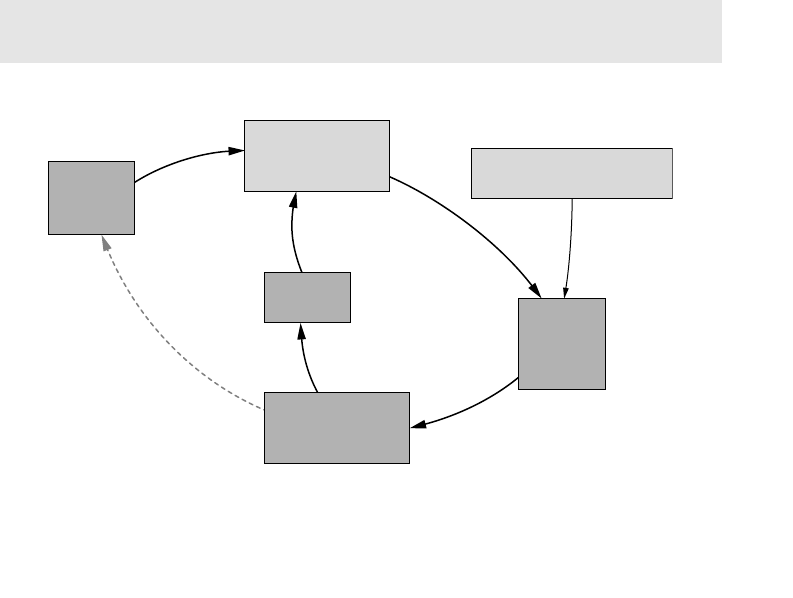

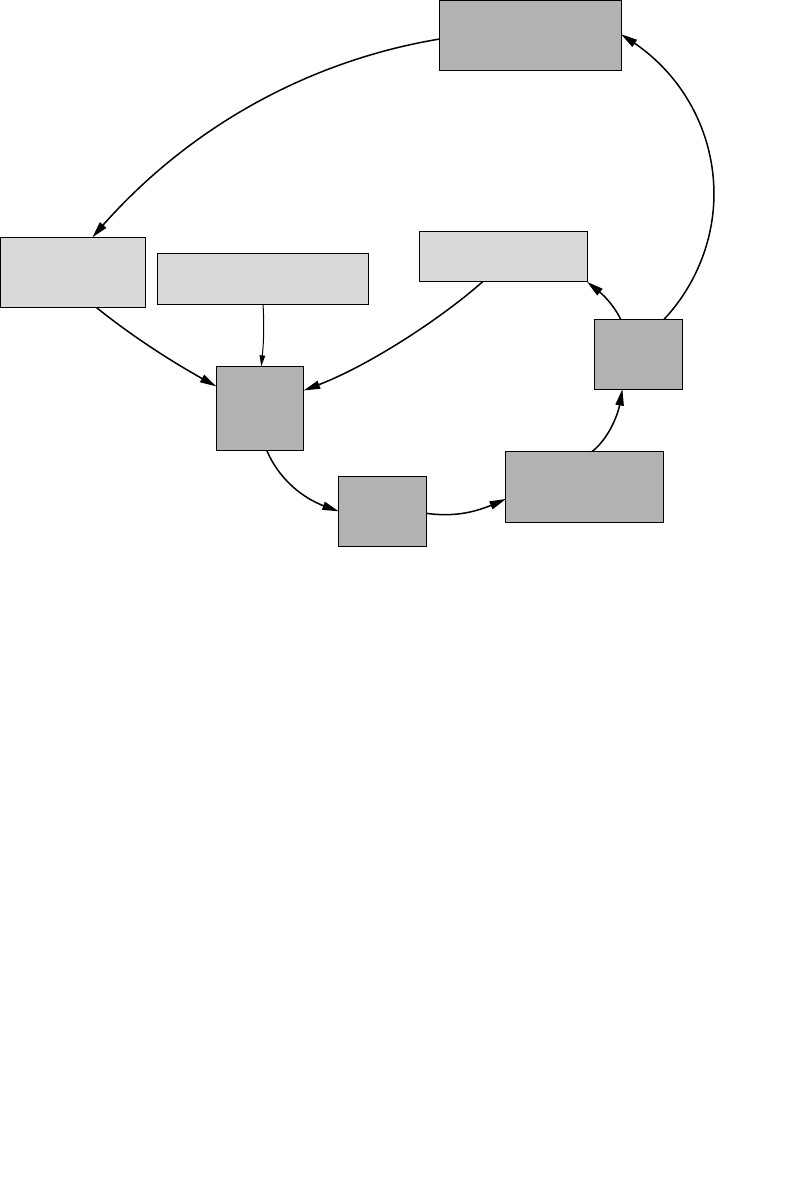

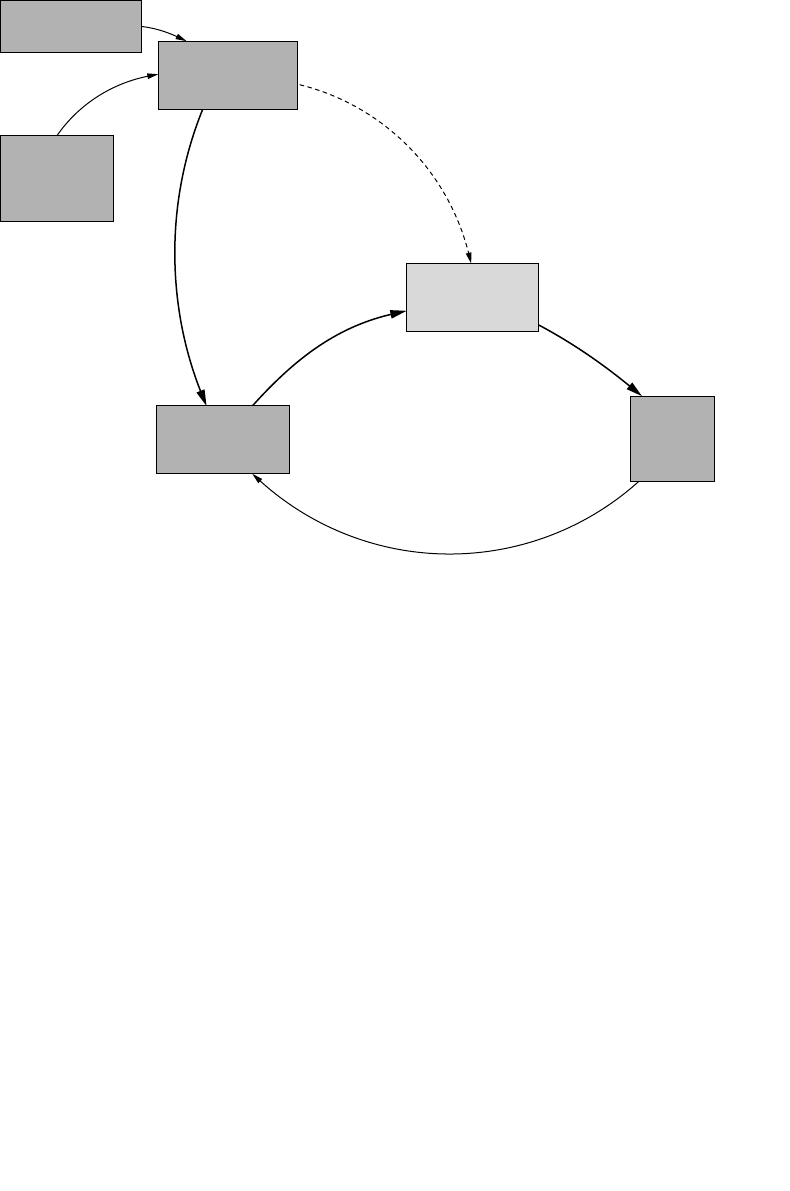

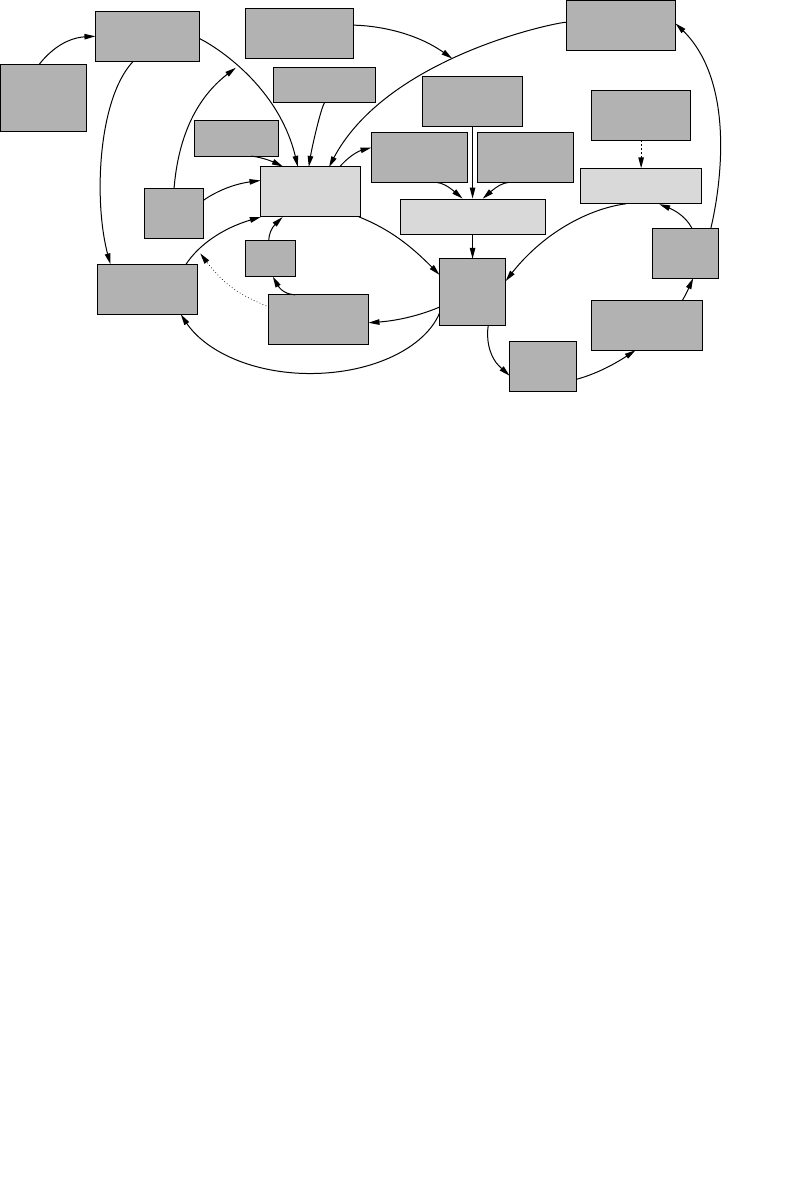

- CERT’s MERIT Models of Insider Threats

- Overview of the CERT Insider Threat Center

- Timeline of the CERT Program’s Insider Threat Work

- 2000 Initial Research

- 2001 Insider Threat Study

- 2001 Insider Threat Database

- 2005 Best Practices

- 2005 System Dynamics Models

- 2006 Workshops

- 2006 Interactive Virtual Simulation Tool

- 2007 Insider Threat Assessment

- 2009 Insider Threat Lab

- 2010 Insider Threat Exercises

- 2010 Insider Threat Study—Banking and Finance Sector

- Caveats about Our Work

- Summary

- Chapter 2. Insider IT Sabotage

- General Patterns in Insider IT Sabotage Crimes

- Mitigation Strategies

- Early Mitigation through Setting of Expectations

- Handling Disgruntlement through Positive Intervention

- Eliminating Unknown Access Paths

- More Complex Monitoring Strategies

- A Risk-Based Approach to Prioritizing Alerts

- Targeted Monitoring

- Measures upon Demotion or Termination

- Secure the Logs

- Test Backup and Recovery Process

- One Final Note of Caution

- Summary

- Chapter 3. Insider Theft of Intellectual Property

- Impacts

- General Patterns in Insider Theft of Intellectual Property Crimes

- The Entitled Independent

- The Ambitious Leader

- Theft of IP inside the United States Involving Foreign Governments or Organizations

- Mitigation Strategies for All Theft of Intellectual Property Cases

- Mitigation Strategies: Final Thoughts

- Summary

- Chapter 4. Insider Fraud

- Chapter 5. Insider Threat Issues in the Software Development Life Cycle

- Chapter 6. Best Practices for the Prevention and Detection of Insider Threats

- Summary of Practices

- Practice 1: Consider Threats from Insiders and Business Partners in Enterprise-Wide Risk Assessments

- Practice 2: Clearly Document and Consistently Enforce Policies and Controls

- Practice 3: Institute Periodic Security Awareness Training for All Employees

- Practice 4: Monitor and Respond to Suspicious or Disruptive Behavior, Beginning with the Hiring Process

- Practice 5: Anticipate and Manage Negative Workplace Issues

- Practice 6: Track and Secure the Physical Environment

- Practice 7: Implement Strict Password- and Account-Management Policies and Practices

- Practice 8: Enforce Separation of Duties and Least Privilege

- Practice 9: Consider Insider Threats in the Software Development Life Cycle

- Practice 10: Use Extra Caution with System Administrators and Technical or Privileged Users

- Practice 11: Implement System Change Controls

- Practice 12: Log, Monitor, and Audit Employee Online Actions

- Practice 13: Use Layered Defense against Remote Attacks

- Practice 14: Deactivate Computer Access Following Termination

- Practice 15: Implement Secure Backup and Recovery Processes

- Practice 16: Develop an Insider Incident Response Plan

- Summary

- References/Sources of Best Practices

- Chapter 7. Technical Insider Threat Controls

- Infrastructure of the Lab

- Demonstrational Videos

- High-Priority Mitigation Strategies

- Control 1: Use of Snort to Detect Exfiltration of Credentials Using IRC

- Control 2: Use of SiLK to Detect Exfiltration of Data Using VPN

- Control 3: Use of a SIEM Signature to Detect Potential Precursors to Insider IT Sabotage

- Control 4: Use of Centralized Logging to Detect Data Exfiltration during an Insider’s Last Days of Employment

- Insider Threat Exercises

- Summary

- Chapter 8. Case Examples

- Sabotage Cases

- Sabotage Case 1

- Sabotage Case 2

- Sabotage Case 3

- Sabotage Case 4

- Sabotage Case 5

- Sabotage Case 6

- Sabotage Case 7

- Sabotage Case 8

- Sabotage Case 9

- Sabotage Case 10

- Sabotage Case 11

- Sabotage Case 12

- Sabotage Case 13

- Sabotage Case 14

- Sabotage Case 15

- Sabotage Case 16

- Sabotage Case 17

- Sabotage Case 18

- Sabotage Case 19

- Sabotage Case 20

- Sabotage Case 21

- Sabotage Case 22

- Sabotage Case 23

- Sabotage Case 24

- Sabotage/Fraud Cases

- Theft of IP Cases

- Fraud Cases

- Miscellaneous Cases

- Summary


- Chapter 9. Conclusion and Miscellaneous Issues

- Insider Threat from Trusted Business Partners

- Overview of Insider Threats from Trusted Business Partners

- Fraud Committed by Trusted Business Partners

- IT Sabotage Committed by Trusted Business Partners

- Theft of Intellectual Property Committed by Trusted Business Partners

- Open Your Mind: Who Are Your Trusted Business Partners?

- Recommendations for Mitigation and Detection

- Malicious Insiders with Ties to the Internet Underground

- Final Summary


- Appendix A. Insider Threat Center Products and Services

- Appendix B. Deeper Dive into the Data

- Appendix C. CyberSecurity Watch Survey

- Appendix D. Insider Threat Database Structure

- Appendix E. Insider Threat Training Simulation: MERIT InterActive

- Appendix F. System Dynamics Background

- Glossary of Terms

- References

- About the Authors

- Index


The CERT® Guide to

Insider Threats


The SEI Series in Software Engineering is a collaborative

undertaking of the Carnegie Mellon Software Engineering Institute (SEI) and

Addison-Wesley to develop and publish books on software engineering and

related topics. The common goal of the SEI and Addison-Wesley is to provide

the most current information on these topics in a form that is easily usable by

practitioners and students.

Books in the series describe frameworks, tools, methods, and technologies

designed to help organizations, teams, and individuals improve their technical

or management capabilities. Some books describe processes and practices for

developing higher-quality software, acquiring programs for complex systems, or

delivering services more effectively. Other books focus on software and system

architecture and product-line development. Still others, from the SEI’s CERT

Program, describe technologies and practices needed to manage software

and network security risk. These and all books in the series address critical

problems in software engineering for which practical solutions are available.

Visit informit.com/sei for a complete list of available products.

The SEI Series in

Software Engineering


The CERT® Guide to

Insider Threats

How to Prevent, Detect, and Respond to

Information Technology Crimes

(Theft, Sabotage, Fraud)

Dawn Cappelli

Andrew Moore

Randall Trzeciak

Upper Saddle River, NJ • Boston • Indianapolis • San Francisco

New York • Toronto • Montreal • London • Munich • Paris • Madrid

Capetown • Sydney • Tokyo • Singapore • Mexico City


The SEI Series in Software Engineering

Many of the designations used by manufacturers and sellers to distinguish their products are claimed as

trademarks. Where those designations appear in this book, and the publisher was aware of a trademark claim,

the designations have been printed with initial capital letters or in all capitals.

CMM, CMMI, Capability Maturity Model, Capability Maturity Modeling, Carnegie Mellon, CERT, and CERT

Coordination Center are registered in the U.S. Patent and Trademark Office by Carnegie Mellon University.

ATAM; Architecture Tradeoff Analysis Method; CMM Integration; COTS Usage-Risk Evaluation; CURE; EPIC;

Evolutionary Process for Integrating COTS Based Systems; Framework for Software Product Line Practice;

IDEAL; Interim Profile; OAR; OCTAVE; Operationally Critical Threat, Asset, and Vulnerability Evaluation;

Options Analysis for Reengineering; Personal Software Process; PLTP; Product Line Technical Probe; PSP;

SCAMPI; SCAMPI Lead Appraiser; SCAMPI Lead Assessor; SCE; SEI; SEPG; Team Software Process; and TSP

are service marks of Carnegie Mellon University.

Special permission to reproduce portions of Carnegie Mellon University copyrighted materials has been

granted by the Software Engineering Institute. (See page 388 for details.)


The authors and publisher have taken care in the preparation of this book, but make no expressed or

implied warranty of any kind and assume no responsibility for errors or omissions. No liability is assumed

for incidental or consequential damages in connection with or arising out of the use of the information or

programs contained herein.

The publisher offers excellent discounts on this book when ordered in quantity for bulk purchases or special

sales, which may include electronic versions and/or custom covers and content particular to your business,

training goals, marketing focus, and branding interests. For more information, please contact: U.S. Corporate

and Government Sales, (800) 382-3419, corpsales@pearsontechgroup.com.

For sales outside the United States, please contact: International Sales, international@pearson.com.

Visit us on the Web: informit.com/aw

Cataloging-in-Publication Data is on file with the Library of Congress.

Copyright © 2012 Pearson Education, Inc.

All rights reserved. Printed in the United States of America. This publication is protected by copyright, and

permission must be obtained from the publisher prior to any prohibited reproduction, storage in a retrieval

system, or transmission in any form or by any means, electronic, mechanical, photocopying, recording, or

likewise. To obtain permission to use material from this work, please submit a written request to Pearson

Education, Inc., Permissions Department, One Lake Street, Upper Saddle River, New Jersey 07458, or you may

fax your request to (201) 236-3290.

ISBN-13: 978-0-321-81257-5

ISBN-10: 0-321-81257-3

Text printed in the United States on recycled paper at Courier in Westford, Massachusetts.

First printing, January 2012


For Fred, Anthony, and Alyssa. You are my life—I love you!

—Dawn

For those who make my life oh so sweet: Susan, Eric, Susan’s

amazing family, and my own Mom, Dad, Roger, and Lisa.

—Andy

For Marianne, Abbie, Nate, and Luke. I am the luckiest person in

the world to have such a wonderful family.

—Randy


Contents

Preface .......................................................................................................... xvii

Acknowledgments .................................................................................... xxxi

Chapter 1. Overview .........................................................................................1

True Stories of Insider Attacks ......................................................3

Insider IT Sabotage .......................................................................3

Insider Fraud ................................................................................4

Insider Theft of Intellectual Property ............................................5

The Expanding Complexity of Insider Threats ..........................6

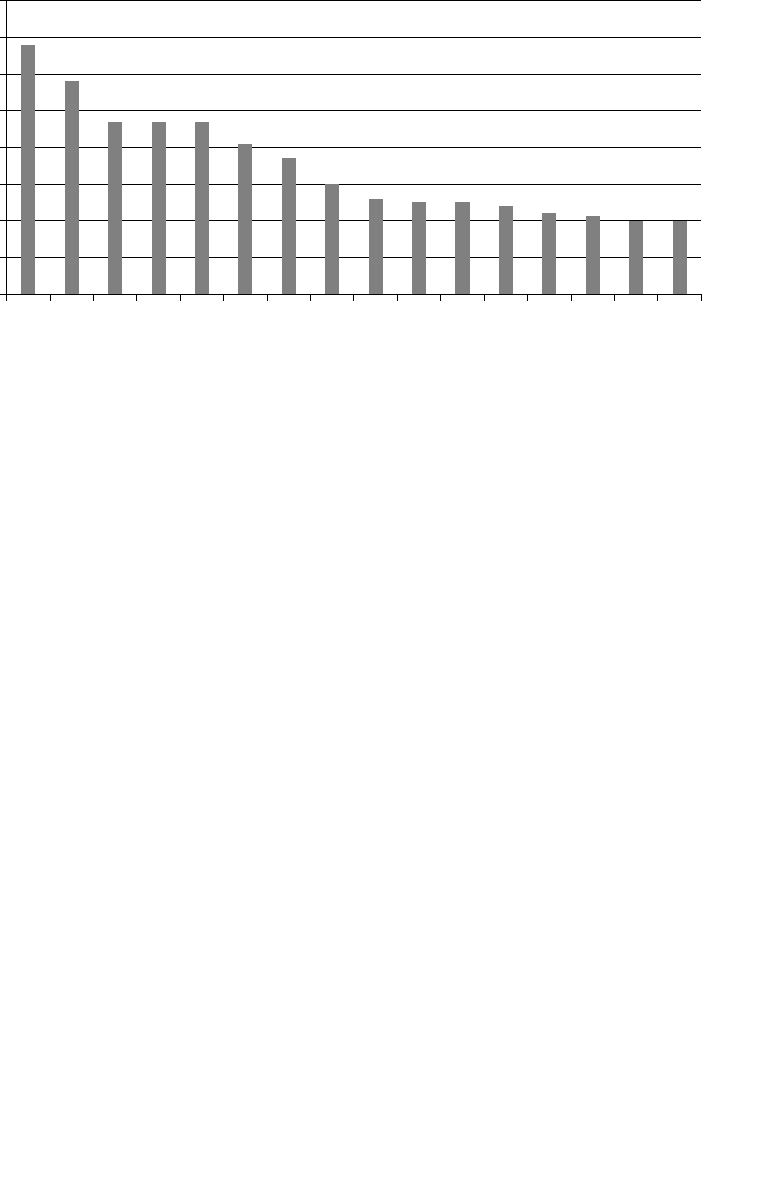

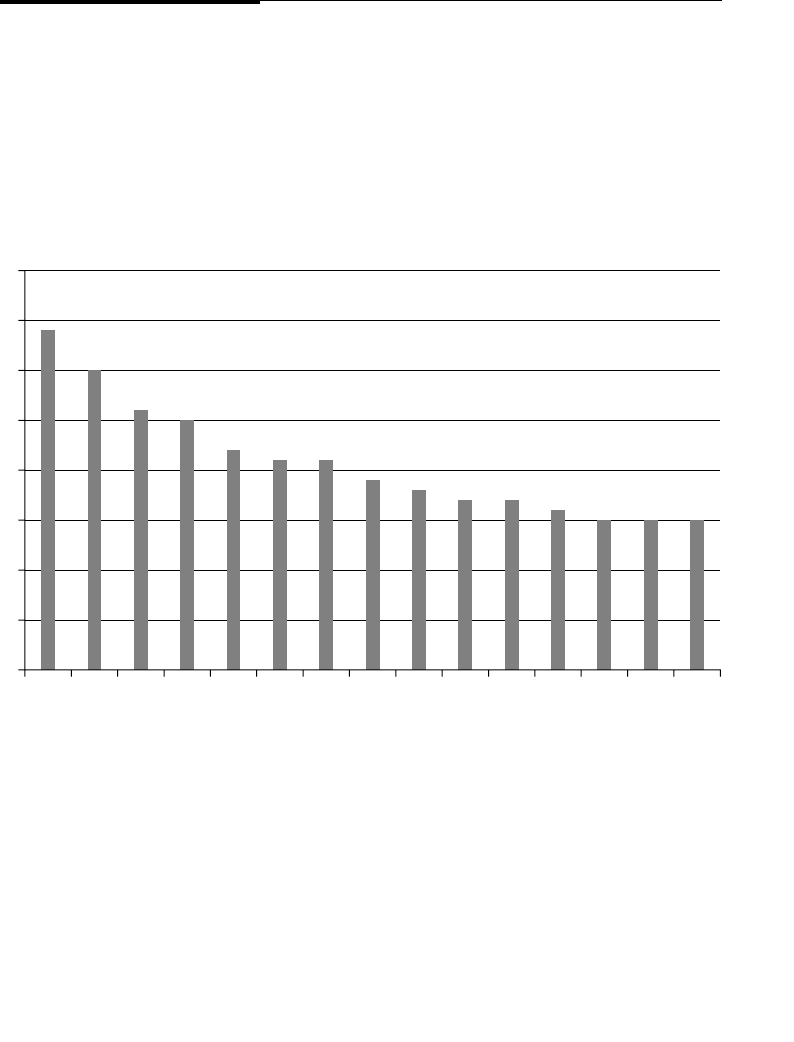

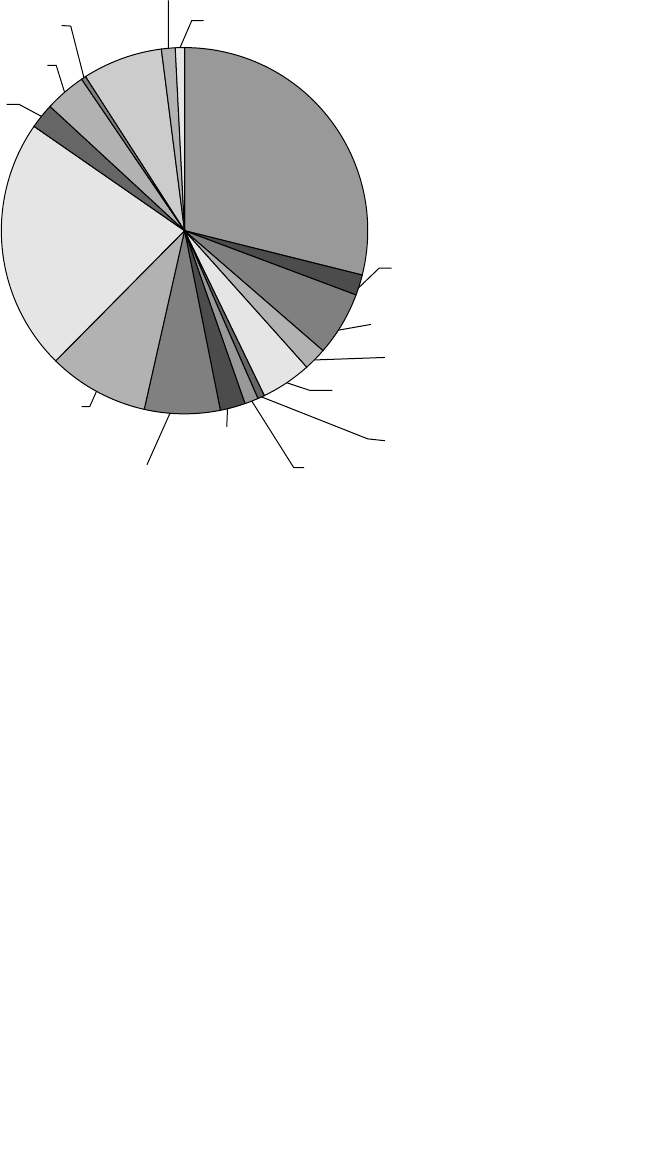

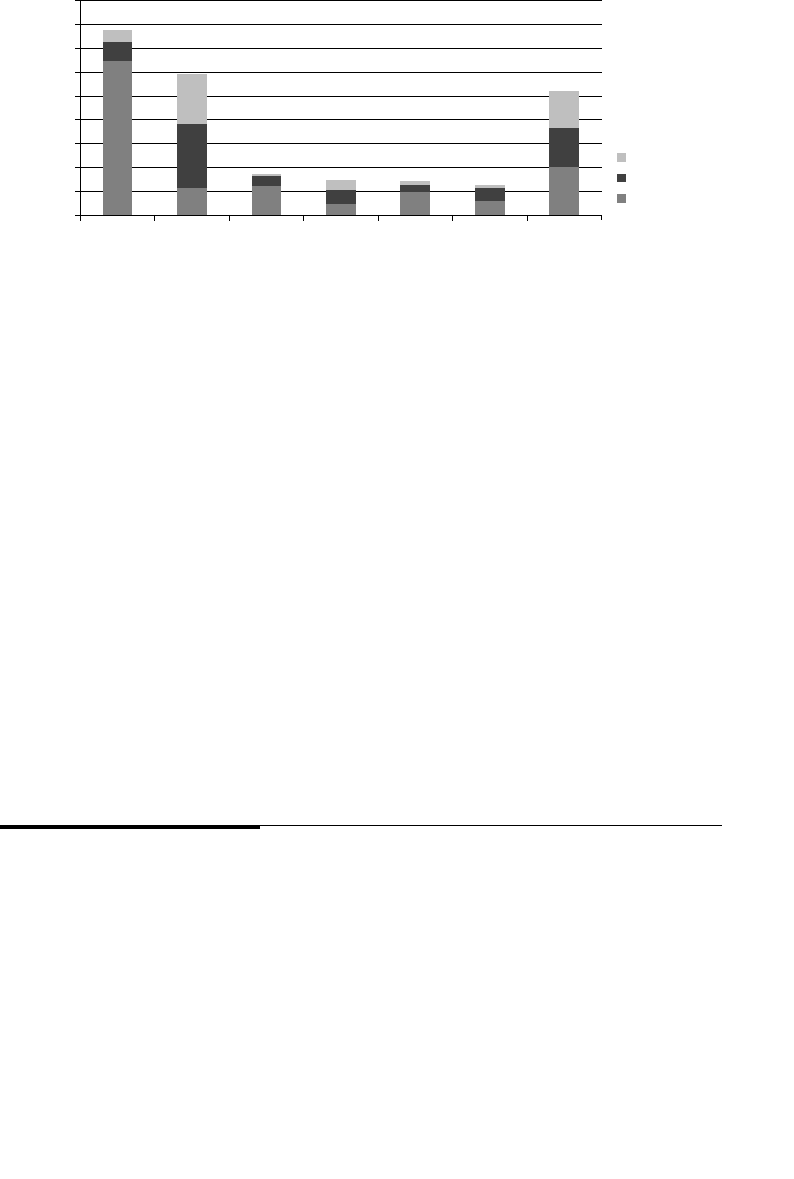

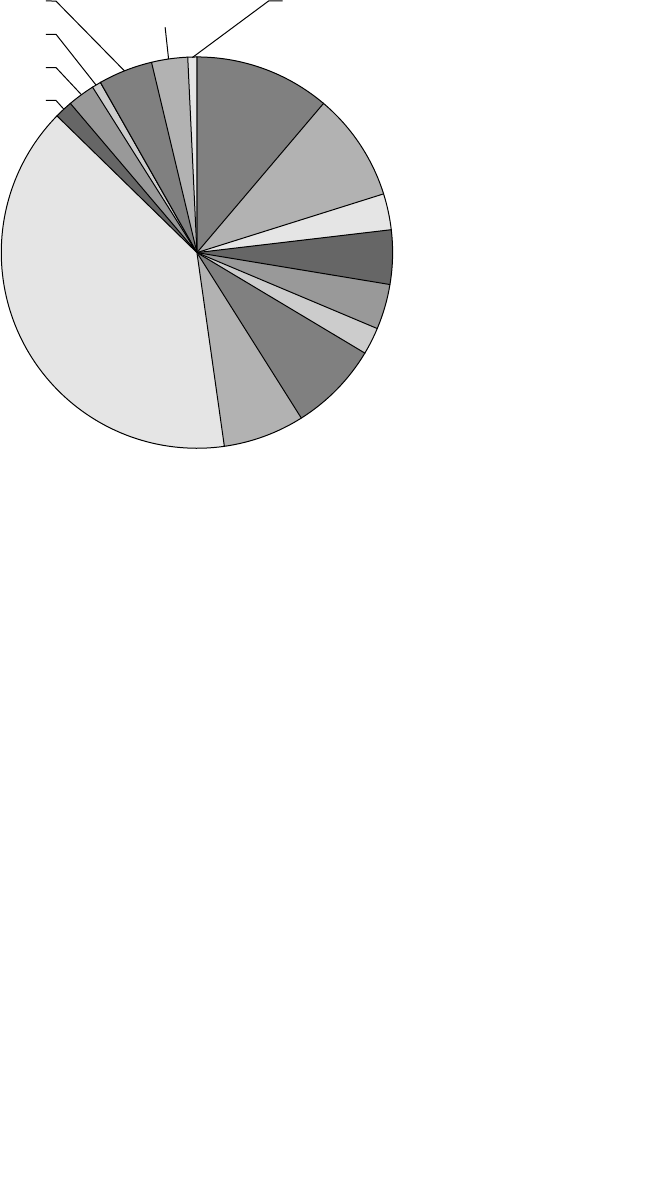

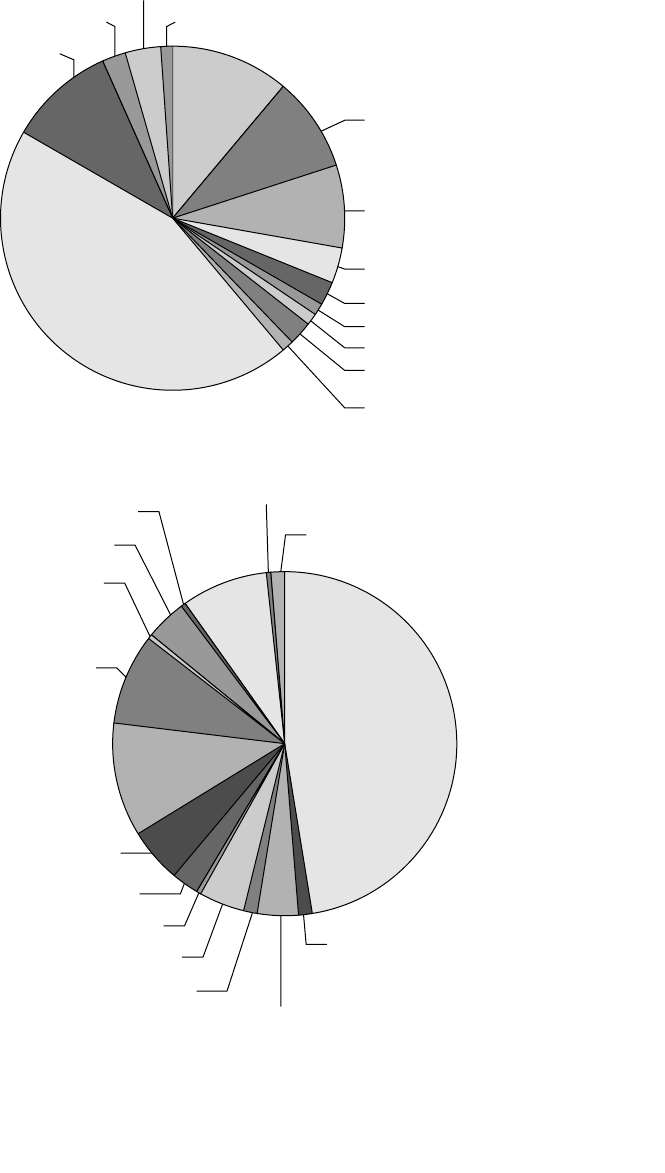

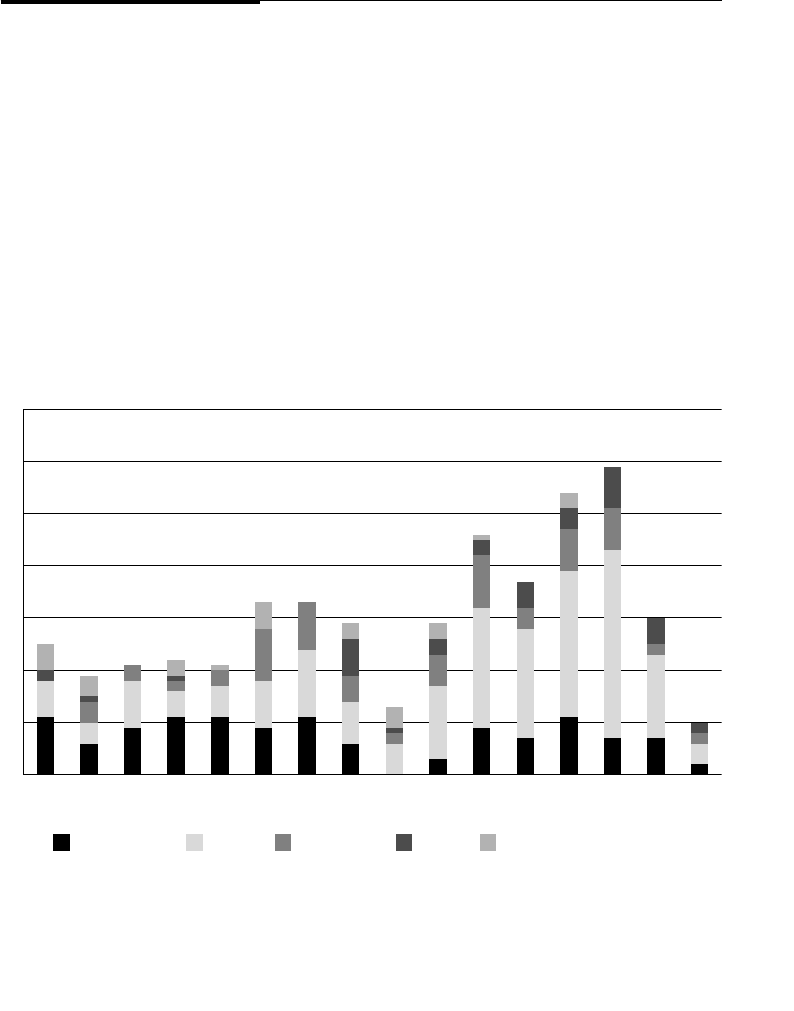

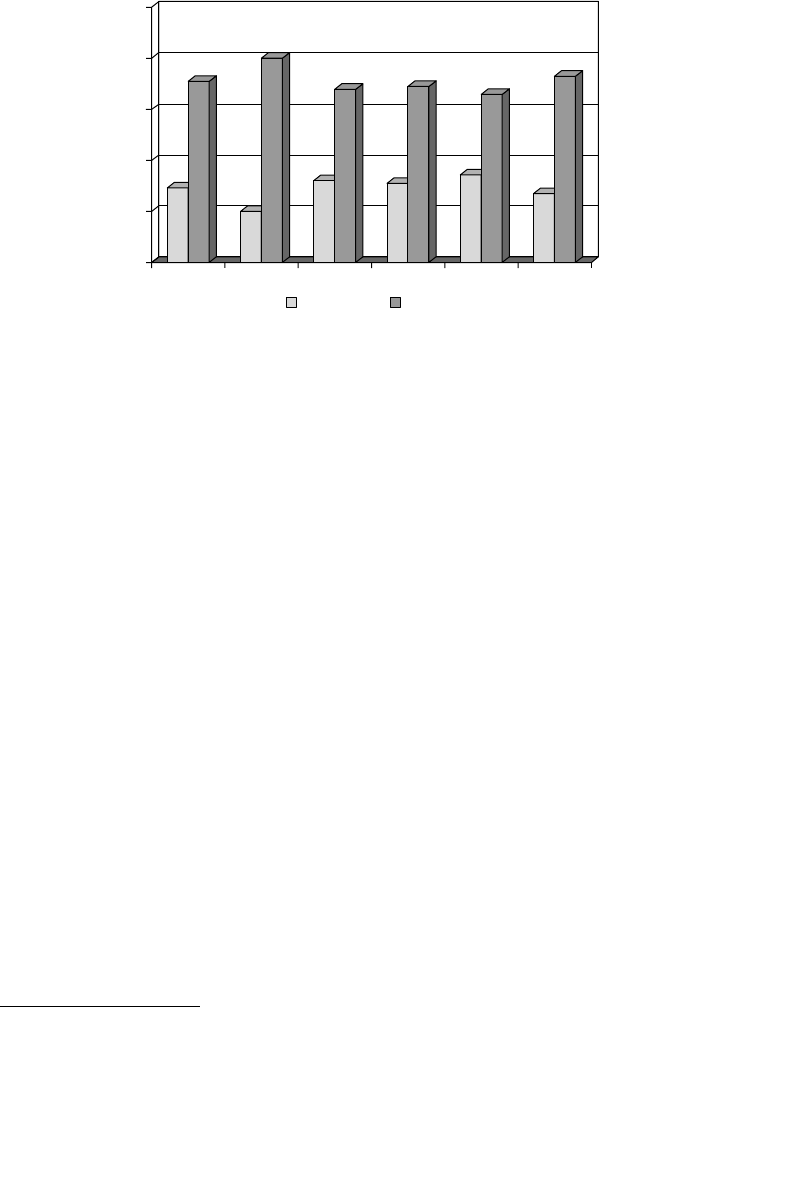

Breakdown of Cases in the Insider Threat Database .................7

CERT’s MERIT Models of Insider Threats ..................................9

Why Our Profiles Are Useful ......................................................10

Why Not Just One Profile? .........................................................11

Why Didn’t We Create a Single Insider Theft Model? ...............12

Overview of the CERT Insider Threat Center ...........................13

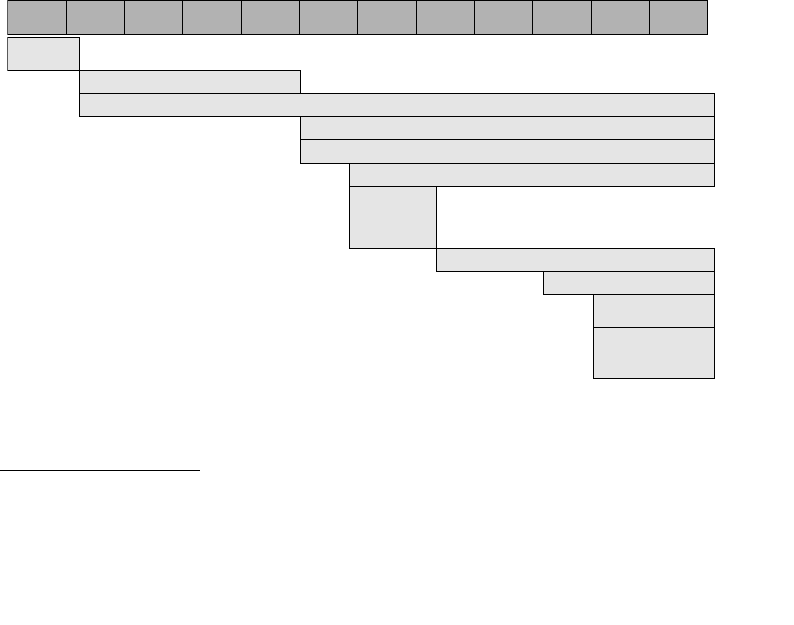

Timeline of the CERT Program’s Insider Threat Work ............16

2000 Initial Research ..................................................................16

2001 Insider Threat Study ..........................................................16

2001 Insider Threat Database .....................................................17

2005 Best Practices .....................................................................17

2005 System Dynamics Models ..................................................17

2006 Workshops ..........................................................................17


2006 Interactive Virtual Simulation Tool

2007 Insider Threat Assessment

2009 Insider Threat Lab

2010 Insider Threat Exercises

2010 Insider Threat Study—Banking and Finance Sector

Caveats about Our Work

Summary

Chapter 2. Insider IT Sabotage

General Patterns in Insider IT Sabotage Crimes

Personal Predispositions

Disgruntlement and Unmet Expectations

Behavioral Precursors

Stressful Events

Technical Precursors and Access Paths

The Trust Trap

Mitigation Strategies

Early Mitigation through Setting of Expectations

Handling Disgruntlement through Positive Intervention

Eliminating Unknown Access Paths

More Complex Monitoring Strategies

A Risk-Based Approach to Prioritizing Alerts

Targeted Monitoring

Measures upon Demotion or Termination

Secure the Logs

Test Backup and Recovery Process

One Final Note of Caution

Summary

Chapter 3. Insider Theft of Intellectual Property

Impacts

General Patterns in Insider Theft of Intellectual Property Crimes


The Entitled Independent

Insider Contribution and Entitlement

Insider Dissatisfaction

Insider Theft and Deception

The Ambitious Leader

Insider Planning of Theft

Increasing Access

Organization’s Discovery of Theft

Theft of IP inside the United States Involving Foreign Governments or Organizations

Who They Are

What They Stole

Why They Stole

Mitigation Strategies for All Theft of Intellectual Property Cases

Exfiltration Methods

Network Data Exfiltration

Host Data Exfiltration

Physical Exfiltration

Exfiltration of Specific Types of IP

Concealment

Trusted Business Partners

Mitigation Strategies: Final Thoughts

Summary

Chapter 4. Insider Fraud

General Patterns in Insider Fraud Crimes

Origins of Fraud

Continuing the Fraud

Outsider Facilitation

Recruiting Other Insiders into the Scheme

Insider Stressors

Insider Fraud Involving Organized Crime


Snapshot of Malicious Insiders Involved with Organized Crime

Who They Are

Why They Strike

What They Strike

How They Strike

Organizational Issues of Concern and Potential Countermeasures

Inadequate Auditing of Critical and Irregular Processes

Employee/Coworker Susceptibility to Recruitment

Verification of Modification of Critical Data

Financial Problems

Excessive Access Privilege

Other Issues of Concern

Mitigation Strategies: Final Thoughts

Summary

Chapter 5. Insider Threat Issues in the Software Development Life Cycle

Requirements and System Design Oversights

Authentication and Role-Based Access Control

Separation of Duties

Automated Data Integrity Checks

Exception Handling

System Implementation, Deployment, and Maintenance Issues

Code Reviews

Attribution

System Deployment

Backups

Programming Techniques Used As an Insider Attack Tool

Modification of Production Source Code or Scripts

Obtaining Unauthorized Authentication Credentials

Disruption of Service and/or Theft of Information


Mitigation Strategies

Summary

Chapter 6. Best Practices for the Prevention and Detection of Insider Threats

Summary of Practices

Practice 1: Consider Threats from Insiders and Business Partners in Enterprise-Wide Risk Assessments

What Can You Do?

Case Studies: What Could Happen if I Don’t Do It?

Practice 2: Clearly Document and Consistently Enforce Policies and Controls

What Can You Do?

Case Studies: What Could Happen if I Don’t Do It?

Practice 3: Institute Periodic Security Awareness Training for All Employees

What Can You Do?

Case Studies: What Could Happen if I Don’t Do It?

Practice 4: Monitor and Respond to Suspicious or Disruptive Behavior, Beginning with the Hiring Process

What Can You Do?

Case Studies: What Could Happen if I Don’t Do It?

Practice 5: Anticipate and Manage Negative Workplace Issues

What Can You Do?

Case Studies: What Could Happen if I Don’t Do It?

Practice 6: Track and Secure the Physical Environment

What Can You Do?

Case Studies: What Could Happen if I Don’t Do It?

Practice 7: Implement Strict Password- and Account-Management Policies and Practices

What Can You Do?

Case Studies: What Could Happen if I Don’t Do It?


Practice 8: Enforce Separation of Duties and Least Privilege

What Can You Do?

Case Studies: What Could Happen if I Don’t Do It?

Practice 9: Consider Insider Threats in the Software Development Life Cycle

What Can You Do?

Requirements Definition

System Design

Implementation

Installation

System Maintenance

Case Studies: What Could Happen if I Don’t Do It?

Practice 10: Use Extra Caution with System Administrators and Technical or Privileged Users

What Can You Do?

Case Studies: What Could Happen if I Don’t Do It?

Practice 11: Implement System Change Controls

What Can You Do?

Case Studies: What Could Happen if I Don’t Do It?

Practice 12: Log, Monitor, and Audit Employee Online Actions

What Can You Do?

Case Studies: What Could Happen if I Don’t Do It?

Practice 13: Use Layered Defense against Remote Attacks

What Can You Do?

Case Studies: What Could Happen if I Don’t Do It?

Practice 14: Deactivate Computer Access Following Termination

What Can You Do?

Case Studies: What Could Happen if I Don’t Do It?

Practice 15: Implement Secure Backup and Recovery Processes

What Can You Do?

Case Studies: What Could Happen if I Don’t Do It?


Practice 16: Develop an Insider Incident Response Plan

What Can You Do?

Case Studies: What Could Happen if I Don’t Do It?

Summary

References/Sources of Best Practices

Chapter 7. Technical Insider Threat Controls

Infrastructure of the Lab

Demonstrational Videos

High-Priority Mitigation Strategies

Control 1: Use of Snort to Detect Exfiltration of Credentials Using IRC

Suggested Solution

Control 2: Use of SiLK to Detect Exfiltration of Data Using VPN

Suggested Solution

Control 3: Use of a SIEM Signature to Detect Potential Precursors to Insider IT Sabotage

Suggested Solution

Database Analysis

SIEM Signature

Common Event Format

Common Event Expression

Applying the Signature

Conclusion

Control 4: Use of Centralized Logging to Detect Data Exfiltration during an Insider’s Last Days of Employment

Suggested Solution

Monitoring Considerations Surrounding Termination

An Example Implementation Using Splunk

Advanced Targeting and Automation

Conclusion


Insider Threat Exercises

Summary

Chapter 8. Case Examples

Sabotage Cases

Sabotage Case 1

Sabotage Case 2

Sabotage Case 3

Sabotage Case 4

Sabotage Case 5

Sabotage Case 6

Sabotage Case 7

Sabotage Case 8

Sabotage Case 9

Sabotage Case 10

Sabotage Case 11

Sabotage Case 12

Sabotage Case 13

Sabotage Case 14

Sabotage Case 15

Sabotage Case 16

Sabotage Case 17

Sabotage Case 18

Sabotage Case 19

Sabotage Case 20

Sabotage Case 21

Sabotage Case 22

Sabotage Case 23

Sabotage Case 24

Sabotage/Fraud Cases

Sabotage/Fraud Case 1

Sabotage/Fraud Case 2

Sabotage/Fraud Case 3

Theft of IP Cases

Theft of IP Case 1

Theft of IP Case 2

Theft of IP Case 3

Theft of IP Case 4

Theft of IP Case 5

Theft of IP Case 6

Fraud Cases

Fraud Case 1

Fraud Case 2

Fraud Case 3

Fraud Case 4

Fraud Case 5

Fraud Case 6

Fraud Case 7

Fraud Case 8

Fraud Case 9

Fraud Case 10

Fraud Case 11

Fraud Case 12

Miscellaneous Cases

Miscellaneous Case 1

Miscellaneous Case 2

Miscellaneous Case 3

Miscellaneous Case 4

Miscellaneous Case 5

Miscellaneous Case 6

Summary

Chapter 9. Conclusion and Miscellaneous Issues

Insider Threat from Trusted Business Partners

Overview of Insider Threats from Trusted Business Partners

Fraud Committed by Trusted Business Partners

IT Sabotage Committed by Trusted Business Partners

Theft of Intellectual Property Committed by Trusted Business Partners

Open Your Mind: Who Are Your Trusted Business Partners?

Recommendations for Mitigation and Detection

Malicious Insiders with Ties to the Internet Underground

Snapshot of Malicious Insiders with Ties to the Internet Underground

Range of Involvement of the Internet Underground

The Crimes

Use of Unknown Access Paths Following Termination

Insufficient Access Controls and Monitoring

Conclusions: Insider Threats Involving the Internet Underground

Final Summary

Let’s End on a Positive Note!

Appendix A. Insider Threat Center Products and Services

Appendix B. Deeper Dive into the Data

Appendix C. CyberSecurity Watch Survey

Appendix D. Insider Threat Database Structure

Appendix E. Insider Threat Training Simulation: MERIT InterActive

Appendix F. System Dynamics Background

Glossary of Terms

References

About the Authors

Index

Preface

A night-shift security guard at a hospital plants malware[1] on the hospital’s computers. The malware could have brought down the heating, ventilation, and cooling systems and ultimately cost lives. Fortunately, he has posted a video of his crime on YouTube and is caught before carrying out his illicit intent.

A programmer quits his job at a nuclear power plant in the United States and returns to his home country of Iran with simulation software containing schematics and other engineering information for the power plant.

A group of employees at a Department of Motor Vehicles work together to make some extra money by creating driver’s licenses for undocumented immigrants and others who could not legally get a license. They are finally arrested after creating a license for an undercover agent who claimed to be on the “No Fly List.”

These insider incidents are the types of crimes we will discuss in this book: crimes committed by current or former employees, contractors, or business partners of the victim organization. As you will see, consequences of malicious insider incidents can be substantial, including financial losses, operational impacts, damage to reputation, and harm to individuals. The actions of a single insider have caused damage to organizations ranging from a few lost staff hours to negative publicity and financial damage so extensive that businesses have been forced to lay off employees and even close operations. Furthermore, insider incidents can have repercussions beyond the victim organization, disrupting operations or services critical to a specific sector or creating serious risks to public safety and national security.

[1] Malware: code intended to execute a malicious function; also commonly referred to as malicious code. [Note: The first time any word from the Glossary is used in the book it will be printed in boldface.]

We use many actual case examples throughout the book. It is important that you consider each case example by asking yourself the following questions: Could this happen in my organization? Could a night-shift security guard plant malicious code on our computers? Do we have employees, contractors, or business partners who might steal our sensitive information and give it to a competitor or foreign government or organization? Do we have systems that our employees could be paid by outsiders to manipulate? For most of you, the answer to at least one of those questions will be an unequivocal yes! The good news is that after more than ten years of research into these types of crimes, we have developed insights and mitigation strategies that you can put in place in your organization to increase your chances of avoiding or surviving these types of situations.

Insider threats are an intriguing and complex problem. Some assert that they are the most significant threat faced by organizations today. High-profile insider threat cases, such as those conducted by people who stole and passed proprietary and classified information to WikiLeaks, certainly support that assertion, and demonstrate the danger posed by insiders in both government and private industry.[2]

Unfortunately, insider threats cannot be mitigated solely through hardware and software solutions. There is no “silver bullet” for stopping insider threats. Furthermore, malicious insiders go to work every day and bypass both physical and electronic security measures. They have legitimate, authorized access to your most confidential, valuable information and systems, and they can use that legitimate access to perform criminal activity. You have to trust them; it is not practical to watch everything each of your employees does every day. The key to successfully mitigating these threats is to turn those advantages for the malicious insiders into advantages for you. This book will help you to do just that.

In 2001, shortly before September 11, the Secret Service sponsored the Insider Threat Study, a joint project conducted by the Secret Service and the Software Engineering Institute CERT Program at Carnegie Mellon University. We never dreamed when we started that study that it would have such far-reaching impacts, and that we would become so passionate about the subject that we would end up devoting more than a decade (to date!) of our careers to the problem.

[2] For information regarding the WikiLeaks insider threat cases, see http://en.wikipedia.org/wiki/Wikileaks.

When we started our work on the insider threat problem, very little was known about insider attacks: Who commits them, why do they do it, when and where do they do it, and how do they set up and carry out their crimes? After delving deep into the issue, we are happy to say that we now know the answers to those questions. In addition, we have come a long way in designing mitigation strategies for preventing, detecting, and responding to those threats.

We have the largest collection of detailed insider threat case files that we know of in the world. At the time of this publication, we had more than 700 cases, and that number grows weekly. We’ve had the opportunity to interview many of the victims of these crimes, giving us a unique chance to find out from supervisors and coworkers how the insider behaved at work, what precipitating events occurred, what technical controls were in place at the time, what policies and procedures were in place but not followed, and so on. We’ve also had the unique opportunity to actually interview convicted insiders and ask them probing questions about what made them do it, what might have made them change their mind, and what technical measures should have been in place to prevent this from happening.

We have a comprehensive database, the CERT insider threat database, where we track the technical, behavioral, and organizational details of every crime. We have combined our technical expertise in the CERT Insider Threat Center with psychological expertise from federal law enforcement, the U.S. Department of Defense (DOD), and our own independent consultants to ensure that we consider the “big picture” of the problem, not just the technical details. We have created “crime models” or “crime profiles” that describe the patterns in the crimes so that you can recognize an escalating insider threat problem in your own organization. We have created an insider threat lab where we are developing new technical solutions based on our models. We created an insider threat vulnerability assessment based on all of the cases in the CERT database so that you can learn from past mistakes and not suffer the same consequences as previous victim organizations. We publish best practices for mitigating insider threats, hold workshops, and conduct technical exercises for incident responders. Finally, we continue to collect new cases of malicious insider compromises to track the changing face of the threat.

We have been publishing our work for the past ten years; now we’ve decided that for the tenth anniversary of the start of our work, it is appropriate to pull all of our most current information into a book. This book provides a comprehensive reference for our entire body of knowledge on insider threats.

Scope of the Book: What Is and Is Not Included

Let’s begin by defining what we mean by malicious insider threats:

A malicious insider threat is a current or former employee, contractor, or business partner who has or had authorized access to an organization’s network, system, or data and intentionally exceeded or misused that access in a manner that negatively affected the confidentiality, integrity, or availability of the organization’s information or information systems.

There are a few important items to note. First of all, malicious insider threats are not only employees.[3] We chose to include contractors in our definition because contractors often are granted authorized access to their clients’ information, systems, and networks, and the nontechnical controls for contractors are often much more lax than for employees. Interestingly, we did not include business partners in our original definition of insider threats in 2001. However, over time we found that more and more crimes involved not employees or contractors, but trusted business partners who had authorized access to the organization’s systems, networks, or information. We encountered cases involving outsourcing, offshoring, and, more recently, cloud computing. These cases raise complex insider threat risks that should not be overlooked; therefore, we decided to add business partners to our definition.

Second, note that malicious insider attacks do not only come from current employees. In fact, one particular type of crime, insider IT sabotage, is more often committed by former employees than current employees.

Now that we have explained whom we will discuss in the book, let’s focus on what types of crimes we will examine. Before we describe the types of crimes, it is important that you understand why we categorized them the way we have. Much of the success in our work is due to the identification of patterns found in the insider threat cases. These patterns describe the “story” behind the cases. Who commits these crimes? Why? Are there signs that they might commit a crime beforehand, so-called observable behaviors, in the workplace? When do they do it, where, and do they do it alone or with others?

The important thing to remember is that the patterns are different for each

type of crime. There is not one single pattern for insider threats in general.

3. Henceforth, for simplicity, reference to insider threats specifically means malicious insider threats

unless otherwise specified.

ptg7481383

xxiPreface

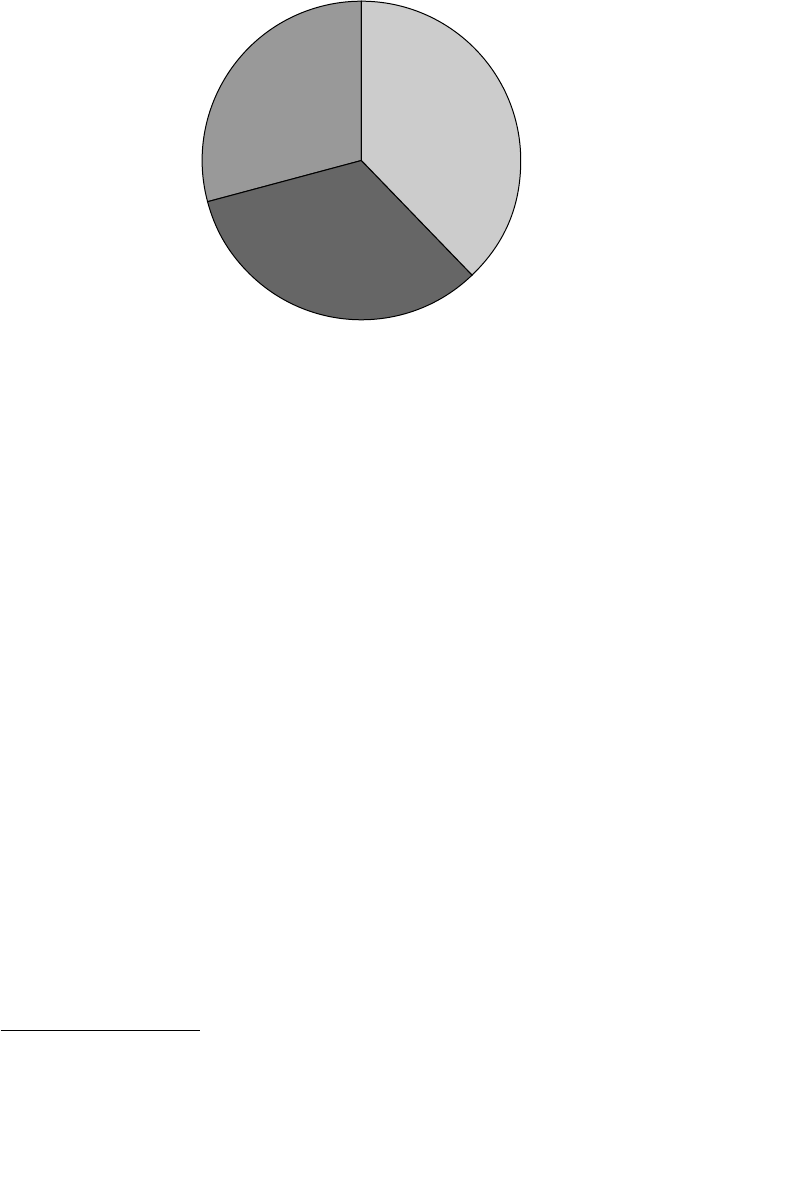

Instead, we have identified three models, or profiles, for insider threats.

Those three types of crimes are as follows.

• IT sabotage: An insider’s use of information technology (IT) to direct specific harm at an organization or an individual.

• Theft of intellectual property (IP): An insider’s use of IT to steal intellectual property from the organization. This category includes industrial espionage involving insiders.

• Fraud: An insider’s use of IT for the unauthorized modification, addition, or deletion of an organization’s data (not programs or systems) for personal gain, or theft of information that leads to an identity crime (e.g., identity theft, credit card fraud).

Note that this book does not specifically describe national security espionage crimes: the act of obtaining, delivering, transmitting, communicating, or receiving information about the national defense with an intent, or reason to believe, that the information may be used to the injury of the United States or to the advantage of any foreign nation. Espionage is a violation of 18 United States Code sections 792–798 and Article 106, Uniform Code of Military Justice.[4] The CERT Insider Threat Center does work in that area, but that research is only available to a limited audience. However, there are many similarities between national security espionage and all three types of crimes: fraud, theft of intellectual property, and IT sabotage. Therefore, we believe there are many lessons to be learned from these insider incidents that can be applied to national security espionage as well.

In addition, this book deals primarily with malicious insider threats. We certainly recognize the importance of unintentional insider threats: insiders who accidentally affect the confidentiality, availability, or integrity of an organization’s information or information systems, possibly by being tricked by an outsider’s use of social engineering. However, we only recently began researching those types of threats; intentional attacks have kept us extremely busy for the past ten years! In addition, we believe that many of the mitigation strategies we advocate for malicious insiders could also be effective against unintentional incidents, as well as those perpetrated by outsiders. And finally, it is difficult to gather information regarding unintentional insider threats; because no crime was committed, organizations tend to handle these incidents quietly, internal to the organization, if possible.

[4] Dictionary of Military and Associated Terms. U.S. Department of Defense, 2005.

Finally, we use many case examples from the CERT database throughout the book. Some of the examples go into greater detail than others; we include only the details that serve to illustrate the point we are making in that part of the book. We also have included a large collection of case examples in Chapter 8, as we believe these will be of great interest to many of you. Again, we stress that you should use that chapter to examine your organization and decide if you need to take any proactive measures to ensure that you do not fall victim to the same types of incidents.

As a matter of policy, we never identify the organizations or insiders involved in our case examples. Some, however, may be apparent to readers, inasmuch as they are drawn from public records, including court documents and newspaper accounts. For examples not in the public domain, we have further masked the targeted organizations to shield their identities.

Intended Audience

A common misconception is that insider threat risk management is the responsibility of IT and information security staff members alone. Unfortunately, that is one of the biggest reasons that insider attacks continue to occur, repeating the same patterns we have observed in cases since 1996, the earliest cases in the CERT database. IT and information security personnel will benefit from reading this book, as we will suggest new technical controls you can implement using technology you are already using in the workplace. In addition, this book can be used by technical staffs to motivate other stakeholders within their organization, since IT and information security cannot successfully implement an effective insider threat mitigation strategy on their own.

We wrote this book with a diverse audience in mind. The ideal audience includes top management, as their support will be needed to implement the organization-wide insider threat policies, procedures, and technologies we recommend. It is important that all managers understand the patterns they need to recognize in their employees, and that they advocate up the management chain for support for an insider threat program.

For the same reasons, government leaders will benefit from this book, since they need to support the government-wide insider threat policies, procedures, and technologies we recommend.

Human resources personnel need to understand this book, as they are often the only ones who are aware of indicators of potential increased risk of insider threats in individual employees. Other staff members who should understand this information include security, software engineering, and physical security personnel, as well as data owners. It is also essential to include your general counsel in any discussions about implementing technical and nontechnical controls to combat the insider threat, to ensure compliance with federal, state, and local laws.

In summary, an effective insider threat program requires understanding, collaboration, and buy-in from across your organization.

Reader Benefits

After reading this book you will realize that the insider threat is real and the consequences of malicious insider activities can be extremely damaging. Real-life case studies will drive home the point that “this could happen to me.” Many organizations focus their technical defenses against outsiders attempting to gain unauthorized access. This book emphasizes the need to balance defense against outsider threats with defense against insider threats, understanding that insider attacks can be more damaging than outsider attacks.

After reading this book you also will be able to recognize the high-level patterns in the three primary types of insider threats: IT sabotage, theft of intellectual property, and fraud. In addition, you will understand the details of how insiders commit those crimes. We present concrete defensive countermeasures that will help you to defend against insider attacks. You can compare your own defensive strategies to the controls we propose and determine whether your existing controls are sufficient to prevent, detect, and respond to insider attacks like those presented throughout the book. Once you identify gaps in your defensive posture, you can implement countermeasures we propose to fill those gaps.

Structure of the Book: Recommendations to Readers

We begin the book in Chapter 1, Overview, by describing the insider threat problem and raising awareness of its complexity, including tangential issues such as insider threats from trusted business partners, malicious insiders with ties to the Internet underground, and programming techniques used as an insider attack tool. Next, we provide a breakdown of the crimes in the CERT database, followed by an overview of the CERT Insider Threat Center. Because our crime “profiles” or “models” have had such an impact on the understanding of insider threats, we also provide a short section describing why those models are so important. We end with a brief timeline of the evolution of our body of work in the CERT Insider Threat Center.

It is important that you read the first chapter so that you understand the concepts and terminology used throughout the remainder of the book. After that, you can use the book in various ways. If the first chapter has been an eye-opener for you and you are interested in gaining a comprehensive understanding of insider threats, continue reading the book from beginning to end. However, it is not necessary to read the book in that manner; it is designed such that Chapters 2 through 9 and the appendices can be used as stand-alone references.

Chapters 2, 3, and 4 are devoted to the three types of insider threats: insider IT sabotage, theft of intellectual property, and fraud. In each chapter we describe who commits the crime so that you know which positions within your organization pose that particular type of threat. We describe the patterns in how each type of crime evolves over time: what motivates the insider, what behavioral indicators are prevalent, how insiders set up and carry out the crime, when they do it, whether others are involved, and so on. We also suggest mitigation strategies throughout each chapter.

We recommend that everyone read Chapter 2, Insider IT Sabotage, as that crime has occurred in organizations in every critical infrastructure sector. Most organizations have some type of intellectual property that must be protected: strategic or business plans, engineering or scientific information, source code, and so on. Therefore, it is important that you read Chapter 3, Insider Theft of Intellectual Property, so that you fully understand who inside your organization poses a threat to that information.

Chapter 4, Insider Fraud, is applicable to you if you have information or systems that your employees could use to make extra money on the side. Credit card information and Personally Identifiable Information (PII) such as Social Security numbers are valuable for committing various types of fraud. However, it is also important that you consider threats posed by insiders modifying information for financial gain. Do you have systems that outsiders would be willing to pay your employees to manipulate? Or do you have systems that your employees could illicitly use for personal financial gain, perhaps by colluding with other employees? If so, Chapter 4 is applicable to you. Note that Chapter 4 also describes the insider threats in the CERT database involving organized crime, as all of those crimes were fraud.

Chapter 5, Insider Threat Issues in the Software Development Life Cycle, explores said issues. The Software Development Life Cycle (SDLC) is synonymous with “software process” as well as “software engineering”; it is a structured methodology used in the development of software products and packages. This methodology is used from the conception phase to the delivery and end of life of a final software product.[5] We explore each phase of the SDLC and the types of insider threats that need to be considered at each phase. In addition, we describe how oversights at various phases have resulted in system vulnerabilities that have enabled insider threats to be carried out later by others, often by end users of the system. If your organization develops software, you should carefully consider the lessons learned in this chapter. It should make you look differently at the entire SDLC: from how to consider potential insider threats in the requirements and design phases, to potential threats posed by developers in the implementation and maintenance phases.

If you are looking for information on mitigation strategies, go to Chapters 6 and 7. You can use Chapter 6, Best Practices for the Prevention and Detection of Insider Threats, to compare best practices for prevention and detection of insider threats to your organization’s practices. Many of the best practices were described in previous chapters, but Chapter 6 summarizes all of the suggestions in a stand-alone reference. This chapter is based on our “Common Sense Guide to Prevention and Detection of Insider Threats,” for years one of the top downloads on the entire CERT Web site.

If you are in a technical security role and would like more detailed information on new controls you can implement, you should read Chapter 7, Technical Insider Threat Controls. This chapter describes the technical solutions we have developed in the CERT insider threat lab. These technical solutions are based on technologies that you most likely are already using for technical security. We provide new signatures, rules, and configurations for using them for more effective detection of insider threats.

[5] Whatis.com

Chapter 8, Case Examples, contains a collection of case examples from the CERT database. We provide a summary table at the beginning of the chapter so that you can reference specific cases by type of crime, sector of the organization, and brief summary of the crime. Many people have requested this type of information from us over the years, so we believe this will provide enormous value to many of you. We highly recommend that you review these cases and consider your vulnerability to the same type of malicious actions within your organization. Chapter 8 is also of value to researchers who might want to use case examples for their own research.

Chapter 9, Conclusion and Miscellaneous Issues, contains a final collection of miscellaneous information that didn’t fit anywhere else in the book. For example, we provide an analysis of insiders with connections to the Internet underground. We also provide details on insiders who attacked not their own organization, but trusted business partners that had a formal relationship with their employer.

After the chapters, we provide a series of appendices.

Appendix A, Insider Threat Center Products and Services, contains information on products and services provided by the CERT Insider Threat Center, including insider threat assessments, workshops, online exercises, and technical controls. We also discuss sponsored research opportunities for the Insider Threat Center. If you are extremely concerned about insider threats and want immediate assistance from the CERT Program, be sure to read this appendix.

Appendix B, Deeper Dive into the Data, contains interesting data mined from the CERT database.

Appendix C, CyberSecurity Watch Survey, contains data collected from the CyberSecurity Watch Survey, an annual survey we conduct in conjunction with CSO Magazine and the Secret Service.[6]

Appendix D, Insider Threat Database Structure, contains the database structure for the CERT database. If you are interested in exactly what kind of data we track for each case, you should read this appendix. Also, we frequently respond to queries to mine the CERT database for interesting data; if you see a field or fields you would like us to explore with you, please contact us. We can be reached via email at insider-threat-feedback@cert.org.

[6] Note that in some years Deloitte and Microsoft also participated in the survey.

Appendix E, Insider Threat Training Simulation: MERIT InterActive, contains detailed information about an interactive virtual simulation we developed for insider threat training. It is basically a prototype of a video game for insider threat training. What do you need for a successful video game? Good guys playing against the bad guys, complex plots, interesting characters: that’s insider threat! We didn’t want to distract you with that information in the body of the book, but some of you might find it interesting, so we included it in this appendix. In addition, if you are interested in new and innovative training methods, this appendix should be of interest.

Appendix F, System Dynamics Background, provides background information on system dynamics.[7] We provide brief references to system dynamics throughout the book, but it is not necessary that you understand system dynamics when you read the book. Nonetheless, we wanted to provide more in-depth information for those of you who wish to learn more.

Finally, the book concludes with references, a glossary, and a complete index.

Note that the accompanying Web site, www.cert.org/insider_threat, contains our system dynamics models for use by other researchers. It is also updated regularly with new insider threat controls, best practices, and case examples.

In summary, the book is intended to be a reference for many different types of readers. It contains the entire CERT Insider Threat Center body of knowledge on insider threats, and therefore can be used as a reference for raising awareness, informing your risk management processes, designing and implementing new technical and nontechnical controls, and much more.

About the CERT Program

The CERT Program is part of the Software Engineering Institute (SEI), a

federally funded research and development center at Carnegie Mellon

University in Pittsburgh. Following the Morris worm incident, which

brought 10% of Internet systems to a halt in November 1988, the Defense

Advanced Research Projects Agency (DARPA) charged the SEI with setting

up a center to coordinate communication among experts during security

emergencies and to help prevent future incidents. This center was named

the CERT Coordination Center (CERT/CC).

7. “System dynamics is a computer-aided approach to policy analysis and design. It applies to dynamic

problems arising in complex social, managerial, economic, or ecological systems—literally any dynamic

systems characterized by interdependence, mutual interaction, information feedback, and circular cau-

sality” (www.systemdynamics.org/what_is_system_dynamics.html).

While we continue to respond to major security incidents and analyze

product vulnerabilities, our role has expanded over the years. Along

with the rapid increase in the size of the Internet and its use for critical

functions, there have been progressive changes in intrusion techniques,

increased amounts of damage, increased difficulty of detecting an attack,

and increased difficulty of catching the attackers. To better manage these

changes, the CERT/CC is now part of the larger CERT Program, which

develops and promotes the use of appropriate technology and systems

management practices to resist attacks on networked systems, to limit

damage, and to ensure continuity of critical services.

The CERT Insider Threat Center

The objective of the CERT Insider Threat Center is to assist organizations

in preventing, detecting, and responding to insider compromises. We have

been researching this problem since 2001 in partnership with the DOD,

the U.S. Department of Homeland Security (DHS), other federal agencies,

federal law enforcement, the intelligence community, private industry,

academia, and the vendor community. The foundation of our work is

the CERT database of more than 700 insider threat cases. We use system

dynamics modeling to characterize the nature of the insider threat prob-

lem, explore dynamic indicators of insider threat risk, and identify and

experiment with administrative and technical controls for insider threat

mitigation. The CERT insider threat lab provides a foundation to iden-

tify, tune, and package technical controls as an extension of our modeling

efforts. We have developed an assessment framework based on the fraud,

theft of intellectual property, and IT sabotage case data that we have used

to assist organizations in identifying their technical and nontechnical vul-

nerabilities to insider threats, as well as executable countermeasures. The

CERT Insider Threat Center is uniquely positioned as a trusted broker to

assist the community both in the short term and through our ongoing research.

Dawn Cappelli and Andy Moore have been working on CERT insider

threat research since 2001, and Randy Trzeciak joined the team in 2006.

Dawn is the technical manager of the CERT Insider Threat Center, Andy

is the lead researcher, and Randy is the technical lead for insider threat

research. Although our insider threat team has now grown into an official

Insider Threat Center, for many years the CERT Program’s insider threat

team consisted of Andy, Randy, and Dawn, which is why we decided to

team up and capture our history in this book.

Summary

The purpose of this book is to raise awareness of the insider threat issue

from the ground up, among staff members in IT, information security, and human

resources; data owners; and physical security, software engineering,

legal, and other security personnel. After studying this problem for more

than a decade, we strongly believe that effectively mitigating insider

threats takes common understanding, support, and communication from all of

those people across the organization. In addition, buy-in is needed from

upper management, who will need to support the cross-organizational

communication required to formulate an effective mitigation strategy.

Finally, mitigation requires awareness and consideration by

government leaders, as some of the issues are even larger than individual

organizations. Employee privacy issues and mergers and acquisitions with

organizations outside the United States are two such examples.

This book covers our extensive work in studying insider IT sabotage, theft

of intellectual property, and fraud. Although it does not deal explicitly with

insiders who committed national security espionage, many of the lessons

in this book are directly applicable to that domain as well.

Most of the book can be read and easily understood by technical and

nontechnical readers alike. The only exception is Chapter 7. If you are not a

“technical” person, you are best off skipping that chapter. However, we

strongly suggest you lend the book to your technical security staff so that

they can consider implementing the controls described there.

Now that you understand the purpose of the book and its contents, we will

begin to dig a little deeper into each type of insider crime, our modeling of

insider threats, and the CERT Insider Threat Center in Chapter 1. We rec-

ommend that you read that chapter next so that you understand the basic

concepts. After completing Chapter 1 you will have the foundation you

need so that you can explore the rest of the book in any order you wish!

Acknowledgments

We would like to start by thanking our amazing team at the CERT Insider

Threat Center. This book represents the hard work of many brilliant peo-

ple. First, thank you to our current team in the Insider Threat Center, listed

here in the order in which they joined the team: Adam Cummings, Mike

Hanley, Derrick Spooner, Chris King, Joji Montelibano, Cindy Nesta, Josh

Burns, George Silowash, and Dr. Bill Claycomb. And a special thank you to

Tara Sparacino and Cindy Walpole, who helped us to keep our heads above

water at work while we wrote this book in our “spare time.” The CERT

Insider Threat Center is part of the Enterprise Threat and Vulnerability

Management (ETVM) team in the CERT Program. The ETVM team is

a very tight-knit group, and we would be remiss if we did not acknowl-

edge these awesome, dedicated technical security experts, again listed in

the order in which they started on the team: Georgia Killcrece (retired, but

sorely missed!), Robin Ruefle, Mark Zajicek, David Mundie, Becky Cooper,

Charlie Ryan, Russ Griffin, Sandi Behrens, Alex Nicoll, Sam Perl, and Kristi

Keeler.

Thank you to the current and former CMU/SEI/CERT staff members

who have participated in our insider threat work over the years: Chris

Bateman, Sally Cunningham, Casey Dunlevy, Rob Floodeen, Carly Huth,

Dr. Joseph (“Jay”) Kadane, Greg Longo, David McIntire, David Mundie,

Dr. Dan Phelps, Stephanie Rogers, Dr. Greg Shannon, Dr. Tim Shimeall,

Rhiannon Weaver, Pam Williams, Bradford Willke, and Mark Zajicek. And

a special thank you to Dr. Tom Longstaff, who was the CERT technical

manager for the original Insider Threat Study, and worked on the CERT

Program’s original insider threat collaboration with the U.S. Department of

Defense (DOD) Personnel Security Research Center.

Thank you to the many fabulous graduate students who have worked on

our insider threat projects throughout the years, starting with our two cur-

rent students: Todd Lewellen, Lynda Pillage, Jen Stanley, Chase Midler,

Andrew Santell, Luke Hogan, Jaime Tupino, Tyler Dean, Will Schroeder,

Matt Houy, Bob Weiland, Devon Rollins, Tom Caron, John Wyrick,

Christopher Nguyen, Hannah Joseph, and Akash Desai. Many of those stu-

dents were from the Scholarship for Service Program—we commend the

U.S. federal government for this program, which produces the most out-

standing talent in the cybersecurity field.

A special thank you to Dr. Eric Shaw, who has been a Visiting Scientist in

the CERT Program and a clinical psychologist at Consulting & Clinical

Psychology, Ltd. Eric has been the guiding force in the psychological

aspects of our research since the conclusion of our first Insider Threat Study

with the Secret Service National Threat Assessment Center.

Thank you to Noopur Davis, Claude Williams, and Dr. Marvine Hamner,

who worked for us as visiting scientists.

Thank you to the CERT Program’s director, Rich Pethia, and deputy direc-

tor, Bill Wilson, who have given us the autonomy and authority over the

past decade to take our research in so many exciting directions. Thank you

to our retired boss, Dr. Barbara Laswell, who helped us evolve from the

Insider Threat Team of three people into the CERT Insider Threat Center.

Thank you to SEI Director Dr. Paul Nielsen and Deputy Director Clyde

Chittister, for their support and recognition. We’re extremely grateful to

Terry Roberts for the visibility she has brought to our work. And thank you

to Dr. Angel Jordan, former provost of Carnegie Mellon University, who

has been an advocate for our work over the years.

We would like to thank the Secret Service, our original partner in this quest

to understand and help organizations protect themselves from malicious

insider attacks. Thank you to National Threat Assessment Center (NTAC)

staff members who participated on the project, especially research coordi-

nator Dr. Marisa Reddy Randazzo, who founded and directed the Insider

Threat Study within NTAC; Dr. Michelle Keeney, who took over when

Marisa left; Eileen Kowalski, who was the lynchpin throughout the project;

and Matt Doherty, the Special Agent in Charge of NTAC. Also, thank you

to Jim Savage, the sponsor of our original work with the Secret Service.

Finally, a big thank you to our Secret Service liaisons for the Insider Threat

Study, who moved to Pittsburgh and joined the CERT Program for a few

years: Cornelius Tate, Dave Iacovetti, and Wayne Peterson. What great