CCNA Cyber Ops SECFND #210-250 Official Cert Guide

- About This E-Book

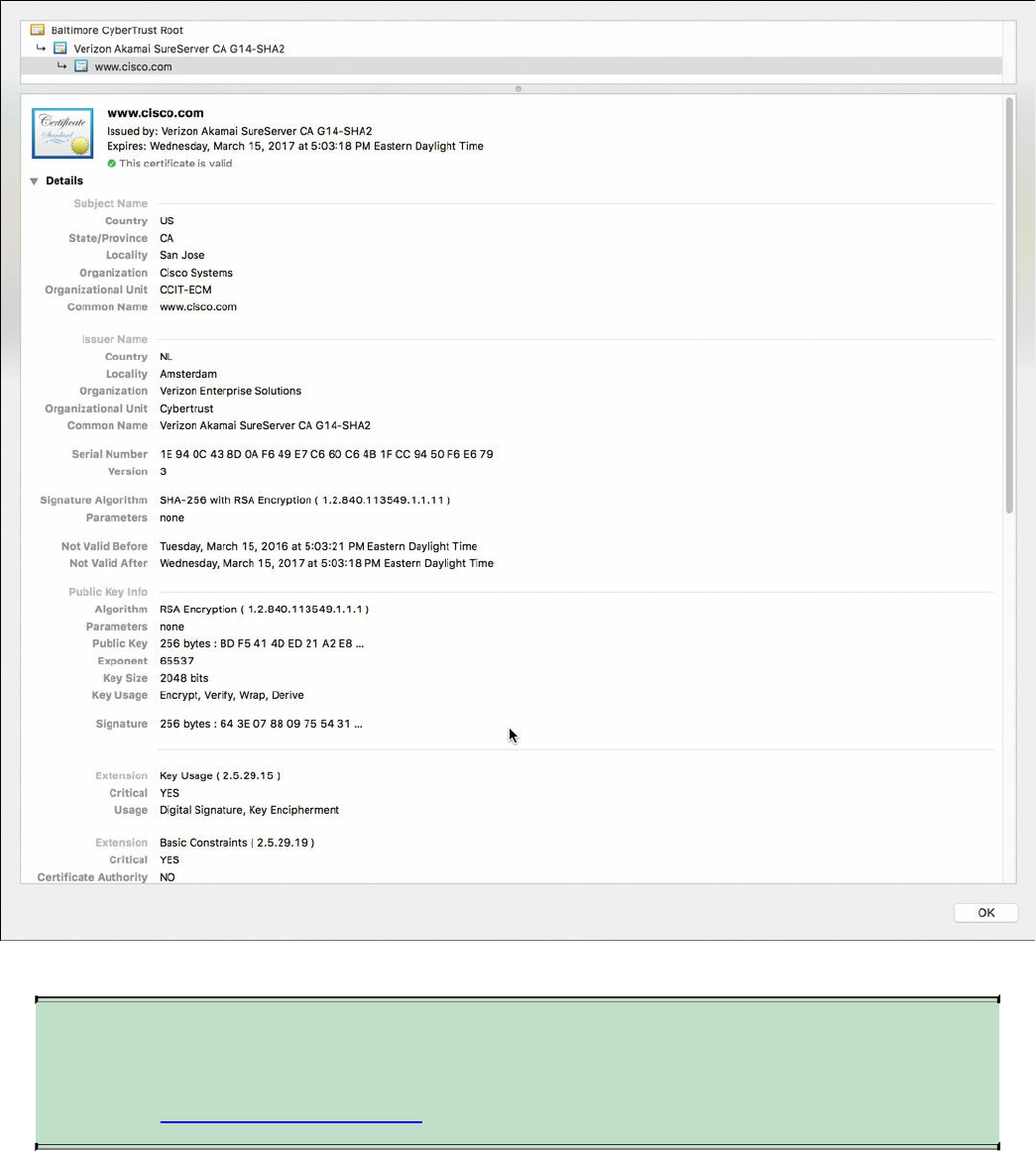

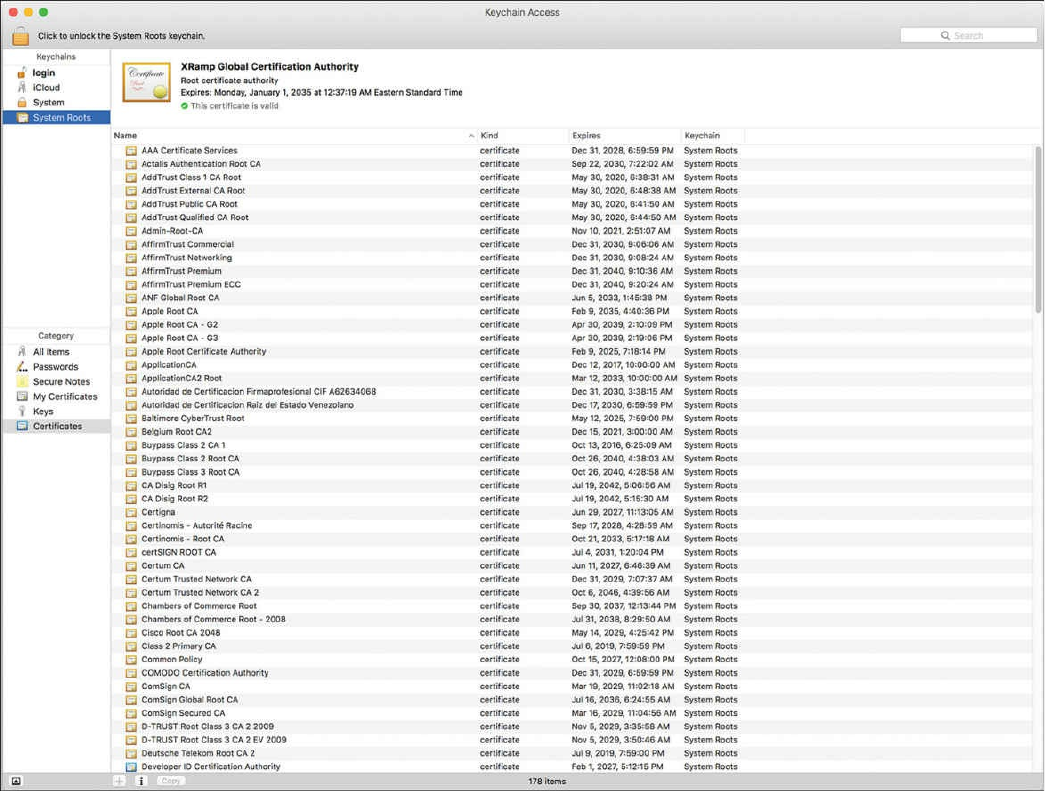

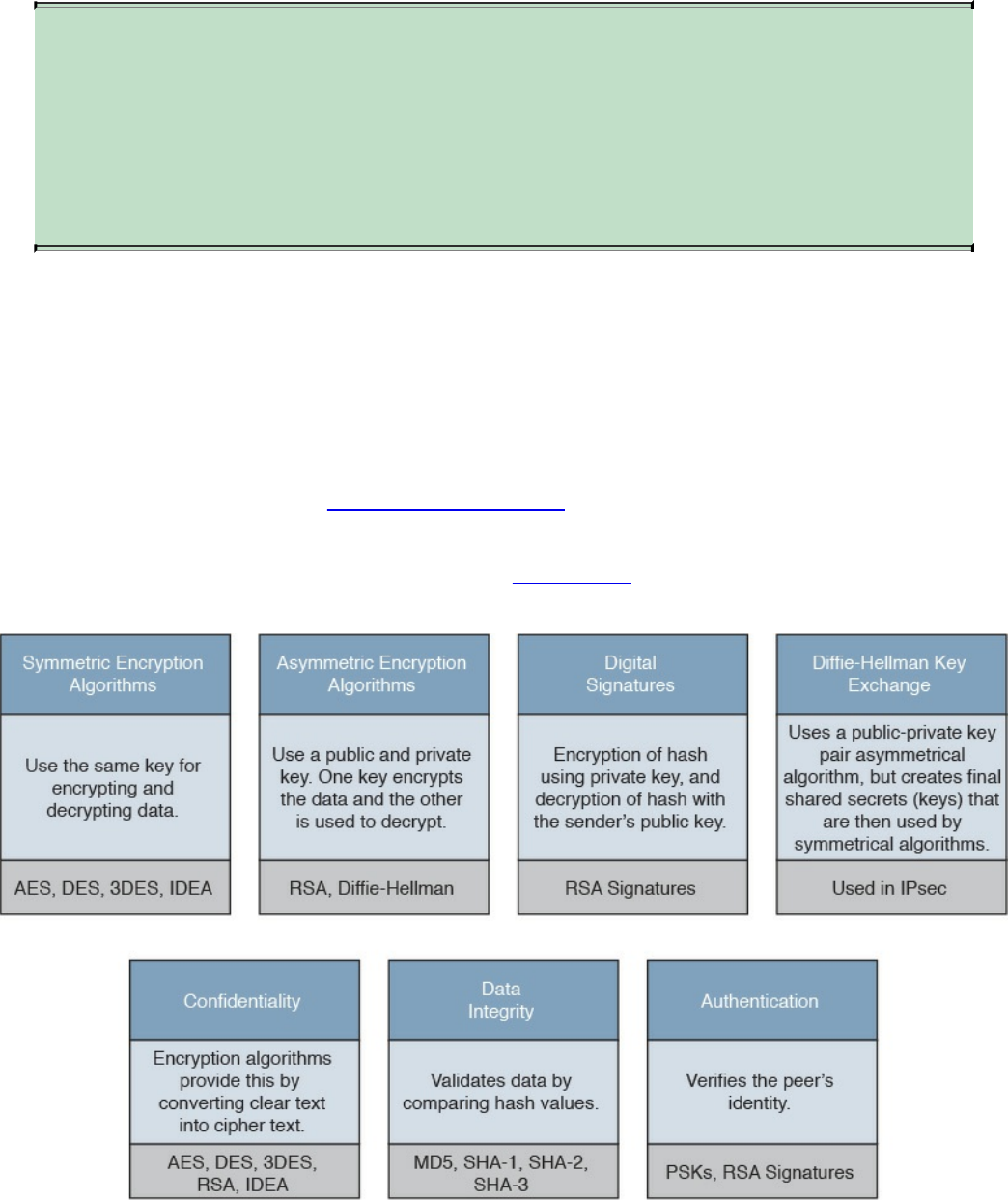

- Title Page

- Copyright Page

- About the Authors

- About the Technical Reviewers

- Dedications

- Acknowledgments

- Contents at a Glance

- Contents

- Command Syntax Conventions

- Introduction

- Part I: Network Concepts

- Part II: Security Concepts

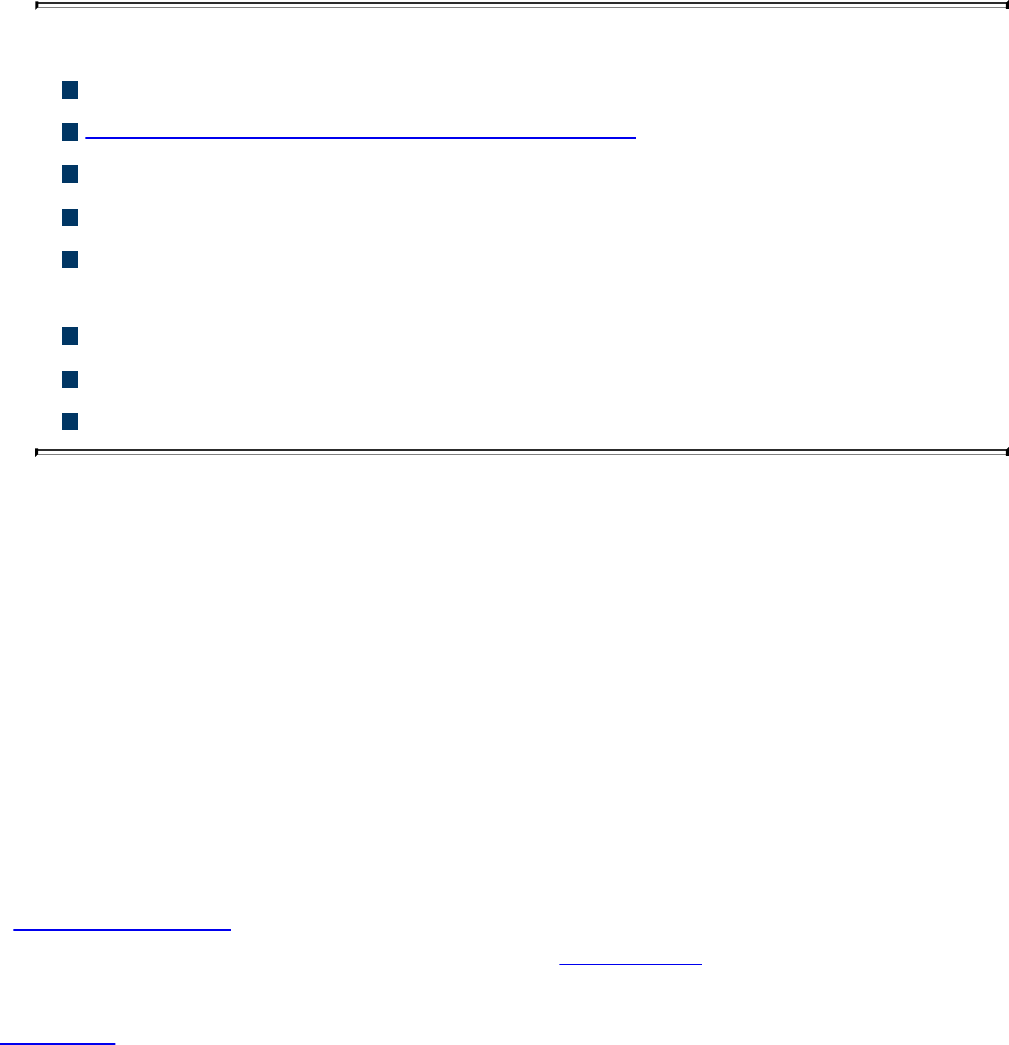

- Chapter 3. Security Principles

- “Do I Know This Already?” Quiz

- Foundation Topics

- The Principles of the Defense-in-Depth Strategy

- What Are Threats, Vulnerabilities, and Exploits?

- Confidentiality, Integrity, and Availability: The CIA Triad

- Risk and Risk Analysis

- Personally Identifiable Information and Protected Health Information

- Principle of Least Privilege and Separation of Duties

- Security Operation Centers

- Forensics

- Exam Preparation Tasks

- Chapter 4. Introduction to Access Controls

- Chapter 5. Introduction to Security Operations Management

- Chapter 3. Security Principles

- Part III: Cryptography

- Part IV: Host-Based Analysis

- Part V: Security Monitoring and Attack Methods

- Chapter 11. Network and Host Telemetry

- Chapter 12. Security Monitoring Operational Challenges

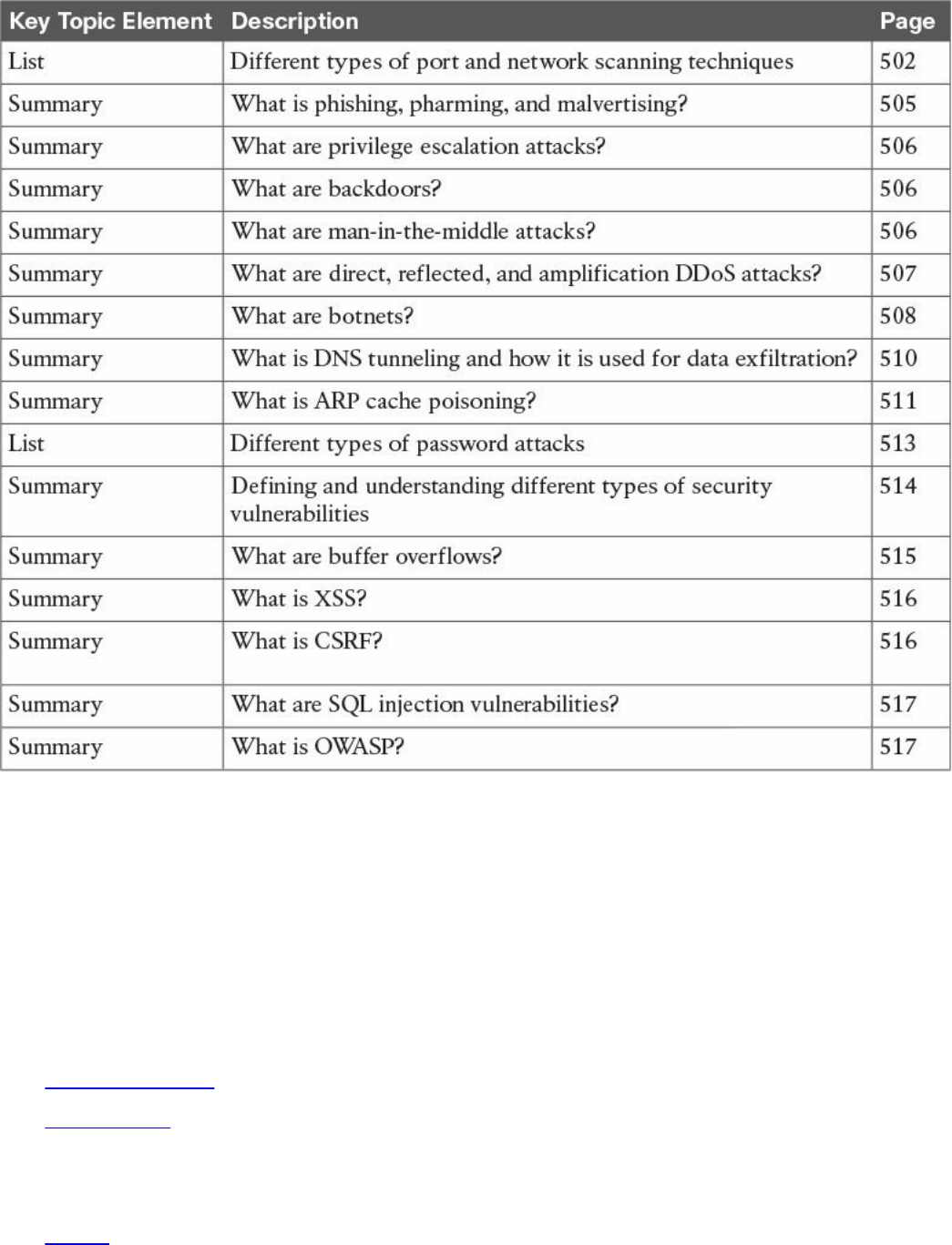

- Chapter 13. Types of Attacks and Vulnerabilities

- Chapter 14. Security Evasion Techniques

- Part VI: Final Preparation

- Part VII: Appendixes

- Glossary

- Index

- Elements Available on the Book Website

- Inside Back Cover

- Inside Front Cover

- Access Card

- Where are the companion content files?

- Code Snippets

About This E-Book

EPUB is an open, industry-standard format for e-books. However, support for EPUB and its many features varies across reading devices and applications. Use your device or app settings to customize the presentation to your liking. Settings that you can customize often include font, font size, single or double column, landscape or portrait mode, and figures that you can click or tap to enlarge. For additional information about the settings and features on your reading device or app, visit the device manufacturer’s Web site.

Many titles include programming code or configuration examples. To optimize the presentation of these elements, view the e-book in single-column, landscape mode and adjust the font size to the smallest setting. In addition to presenting code and configurations in the reflowable text format, we have included images of the code that mimic the presentation found in the print book; therefore, where the reflowable format may compromise the presentation of the code listing, you will see a “Click here to view code image” link. Click the link to view the print-fidelity code image. To return to the previous page viewed, click the Back button on your device or app.

CCNA Cyber Ops SECFND 210-250 Official Cert Guide

Omar Santos

Joseph Muniz

Stefano De Crescenzo

Copyright © 2017 Pearson Education, Inc.

Published by:

Cisco Press

800 East 96th Street

Indianapolis, IN 46240 USA

All rights reserved. No part of this book may be reproduced or transmitted in any form or by any means, electronic or mechanical, including photocopying, recording, or by any information storage and retrieval system, without written permission from the publisher, except for the inclusion of brief quotations in a review.

Printed in the United States of America

1 17

Library of Congress Control Number: 2017931952

ISBN-10: 1-58714-702-5

ISBN-13: 978-1-58714-702-9

Warning and Disclaimer

This book is designed to provide information about the CCNA Cyber Ops SECFND #210-250 exam. Every effort has been made to make this book as complete and accurate as possible, but no warranty or fitness is implied.

The information is provided on an “as is” basis. The authors, Cisco Press, and Cisco Systems, Inc., shall have neither liability nor responsibility to any person or entity with respect to any loss or damages arising from the information contained in this book or from the use of the discs or programs that may accompany it.

The opinions expressed in this book belong to the authors and are not necessarily those of Cisco Systems, Inc.

Editor-in-Chief: Mark Taub

Product Line Manager: Brett Bartow

Managing Editor: Sandra Schroeder

Development Editor: Christopher Cleveland

Project Editor: Mandie Frank

Composition: Tricia Bronkella

Indexer: Ken Johnson

Alliances Manager, Cisco Press: Ron Fligge

Executive Editor: Mary Beth Ray

Technical Editors: Pavan Reddy, Ron Taylor

Copy Editor: Bart Reed

Designer: Chuti Prasertsith

Editorial Assistant: Vanessa Evans

Proofreader: The Wordsmithery LLC

Trademark Acknowledgments

All terms mentioned in this book that are known to be trademarks or service marks have been appropriately capitalized. Cisco Press or Cisco Systems, Inc., cannot attest to the accuracy of this information. Use of a term in this book should not be regarded as affecting the validity of any trademark or service mark.

Special Sales

For information about buying this title in bulk quantities, or for special sales opportunities (which may include electronic versions; custom cover designs; and content particular to your business, training goals, marketing focus, or branding interests), please contact our corporate sales department at corpsales@pearsoned.com or (800) 382-3419.

For government sales inquiries, please contact governmentsales@pearsoned.com.

For questions about sales outside the United States, please contact intlcs@pearson.com.

Feedback Information

At Cisco Press, our goal is to create in-depth technical books of the highest quality and value. Each book is crafted with care and precision, undergoing rigorous development that involves the unique expertise of members from the professional technical community.

Readers’ feedback is a natural continuation of this process. If you have any comments regarding how we could improve the quality of this book, or otherwise alter it to better suit your needs, you can contact us through email at feedback@ciscopress.com. Please make sure to include the book title and ISBN in your message.

We greatly appreciate your assistance.

Americas Headquarters

Cisco Systems, Inc.

San Jose, CA

Asia Pacific Headquarters

Cisco Systems (USA) Pte. Ltd.

Singapore

Europe Headquarters

Cisco Systems International BV

Amsterdam, The Netherlands

Cisco has more than 200 offices worldwide. Addresses, phone numbers, and fax numbers are listed on the Cisco Website at www.cisco.com/go/offices.

CCDE, CCENT, Cisco Eos, Cisco HealthPresence, the Cisco logo, Cisco Lumin, Cisco Nexus, Cisco StadiumVision, Cisco Telepresence, Cisco WebEx, DCE, and Welcome to the Human Network are trademarks; Changing the Way We Work, Live, Play, and Learn and Cisco Store are service marks; and Access Registrar, Aironet, AsyncOS, Bringing the Meeting To You, Catalyst, CCDA, CCDP, CCIE, CCIP, CCNA, CCNP, CCSP, CCVP, Cisco, the Cisco Certified Internetwork Expert logo, Cisco IOS, Cisco Press, Cisco Systems, Cisco Systems Capital, the Cisco Systems logo, Cisco Unity, Collaboration Without Limitation, EtherFast, EtherSwitch, Event Center, Fast Step, Follow Me Browsing, FormShare, GigaDrive, HomeLink, Internet Quotient, IOS, iPhone, iQuick Study, IronPort, the IronPort logo, LightStream, Linksys, MediaTone, MeetingPlace, MeetingPlace Chime Sound, MGX, Networkers, Networking Academy, Network Registrar, PCNow, PIX, PowerPanels, ProConnect, ScriptShare, SenderBase, SMARTnet, Spectrum Expert, StackWise, The Fastest Way to Increase Your Internet Quotient, TransPath, WebEx, and the WebEx logo are registered trademarks of Cisco Systems, Inc. and/or its affiliates in the United States and certain other countries.

All other trademarks mentioned in this document or website are the property of their respective owners. The use of the word partner does not imply a partnership relationship between Cisco and any other company. (0812R)

About the Authors

Omar Santos is an active member of the cyber security community, where he leads several industry-wide initiatives and standards bodies. His active role helps businesses, academic institutions, state and local law enforcement agencies, and other participants dedicated to increasing the security of their critical infrastructures.

Omar is the author of over a dozen books and video courses, as well as numerous white papers, articles, and security configuration guidelines and best practices. Omar is a principal engineer of the Cisco Product Security Incident Response Team (PSIRT), where he mentors and leads engineers and incident managers during the investigation and resolution of cyber security vulnerabilities. Additional information about Omar’s current projects can be found at omarsantos.io, and you can follow Omar on Twitter @santosomar.

Joseph Muniz is an architect at Cisco Systems and a security researcher. He has extensive experience in designing security solutions and architectures for the top Fortune 500 corporations and the U.S. government. Joseph’s current role gives him visibility into the latest trends in cyber security, from both leading vendors and customers. Examples of Joseph’s research include his RSA talk titled “Social Media Deception,” which has been quoted by many sources (search for “Emily Williams Social Engineering”), as well as his articles in PenTest Magazine regarding various security topics.

Joseph runs The Security Blogger website, a popular resource for security, hacking, and product implementation. He is the author of and a contributor to several publications covering various penetration testing and security topics. You can follow Joseph at www.thesecurityblogger.com and @SecureBlogger.

Stefano De Crescenzo is a senior incident manager with the Cisco Product Security Incident Response Team (PSIRT), where he focuses on product vulnerability management and Cisco products forensics. He is the author of several blog posts and white papers about security best practices and forensics. He is an active member of the security community and has been a speaker at several security conferences.

Stefano specializes in malware detection and integrity assurance in critical infrastructure devices, and he is the author of integrity assurance guidelines for Cisco IOS, IOS-XE, and ASA.

Stefano holds a B.Sc. and M.Sc. in telecommunication engineering from Politecnico di Milano, Italy, and an M.Sc. in telecommunication from Danish Technical University, Denmark. He is currently pursuing an Executive MBA at Vlerick Business School in Belgium. He also holds a CCIE in Security #26025 and is CISSP and CISM certified.

About the Technical Reviewers

Pavan Reddy serves as a Security Principal in Cisco Security Services. Pavan has 20+ years of security and network consulting experience in Financial Services, Healthcare, Service Provider, and Retail arenas. Recent projects cover Technical Security Strategy and Architecture, Network Segmentation Strategy, Threat Intelligence Analytics, Distributed Denial-of-Service Mitigation Architectures, and DNS Architecture and Security. Pavan holds multiple CCIEs and a BS in Computer Engineering.

Ron Taylor has been in the Information Security field for almost 20 years. Ten of those years were spent in consulting where he gained experience in many areas. In 2008, he joined the Cisco Global Certification Team as an SME in Information Assurance. In 2012, he moved into a position with the Security Research & Operations group (PSIRT), where his focus was mostly on penetration testing of Cisco products and services. He was also involved in developing and presenting security training to internal development and test teams globally. Additionally, he provided consulting support to many product teams as an SME on product security testing. In his current role, he is a Consulting Systems Engineer specializing in Cisco’s security product line. Certifications include GPEN, GWEB, GCIA, GCIH, GWAPT, RHCE, CCSP, CCNA, CISSP, and MCSE. Ron is also a Cisco Security Blackbelt, SANS mentor, Cofounder and President of the Raleigh BSides Security Conference, and a member of the Packet Hacking Village team at Defcon.

Dedications

I would like to dedicate this book to my lovely wife, Jeannette, and my two beautiful children, Hannah and Derek, who have inspired and supported me throughout the development of this book.

I also dedicate this book to my father, Jose, and to the memory of my mother, Generosa. Without their knowledge, wisdom, and guidance, I would not have the goals that I strive to achieve today.

—Omar Santos

I would like to dedicate this book to the memory of my father, Raymond Muniz. He never saw me graduate from college or accomplish great things, such as writing this book. I would also like to apologize to him for dropping out of soccer in high school. I picked it back up later in life, and today play in at least two competitive matches a week. Your hard work paid off. Hopefully you somehow know that.

—Joseph Muniz

This book is dedicated to my wife, Nevena, and my beautiful daughters, Sara and Tea, who supported and inspired me during the development of this book. Specifically, Tea was born a few weeks before I started writing my first chapter, so she is especially connected with this book.

I would also like to mention my whole family: my mother, Mariagrazia, and my sister, Francesca, who supported my family and me while I was away writing. I also dedicate this book to the memory of my father, Cataldo.

—Stefano De Crescenzo

Acknowledgments

I would like to thank the technical editors, Pavan Reddy and Ron Taylor, for their time and technical expertise. They verified our work and contributed to the success of this book. I would also like to thank the Cisco Press team, especially Mary Beth Ray, Denise Lincoln, and Christopher Cleveland, for their patience, guidance, and consideration. Their efforts are greatly appreciated. Finally, I would like to acknowledge the Cisco Security Research and Operations teams, Cisco Advanced Threat Analytics, and Cisco Talos. Several leaders in the network security industry work there, supporting our Cisco customers, often under very stressful conditions, and working miracles daily. They are truly unsung heroes, and I am honored to have had the privilege of working side by side with them in the trenches while protecting customers and Cisco.

—Omar Santos

I would first like to thank Omar and Stefano for including me on this project. I really enjoyed working with these guys and hope we can do more in the future. I also would like to thank the Cisco Press team and technical editors, Pavan Reddy and Ron Taylor, for their fantastic support in making the writing process top quality and easy for everybody. Hey, Ron, you got this and the CTR comic. 2016 was great for you, Mr. Green.

I would also like to thank all the great people in my life who make me who I am.

Finally, a message for Raylin Muniz (age 7): Hopefully one day you can accomplish your dreams like I have with this book.

—Joseph Muniz

I would like to thank Omar and Joey for being fantastic mates in the development of this book. A special mention goes to my wife as well, for supporting me throughout this journey and for helping me by reviewing my work.

Additionally, this book wouldn’t have been possible without the help of the Cisco Press team and in particular of Chris Cleveland. His guidance has been very precious. A big thanks goes to the technical reviewers, Pavan and Ron. Thanks for keeping me honest and to the point! A big thanks also to Eric Vyncke for his numerous suggestions.

—Stefano De Crescenzo

Contents at a Glance

Introduction

Part I Network Concepts

Chapter 1 Fundamentals of Networking Protocols and Networking Devices

Chapter 2 Network Security Devices and Cloud Services

Part II Security Concepts

Chapter 3 Security Principles

Chapter 4 Introduction to Access Controls

Chapter 5 Introduction to Security Operations Management

Part III Cryptography

Chapter 6 Fundamentals of Cryptography and Public Key Infrastructure (PKI)

Chapter 7 Introduction to Virtual Private Networks (VPNs)

Part IV Host-Based Analysis

Chapter 8 Windows-Based Analysis

Chapter 9 Linux- and Mac OS X–Based Analysis

Chapter 10 Endpoint Security Technologies

Part V Security Monitoring and Attack Methods

Chapter 11 Network and Host Telemetry

Chapter 12 Security Monitoring Operational Challenges

Chapter 13 Types of Attacks and Vulnerabilities

Chapter 14 Security Evasion Techniques

Part VI Final Preparation

Chapter 15 Final Preparation

Part VII Appendixes

Appendix A Answers to the “Do I Know This Already?” Quizzes and Q&A Questions

Glossary

Index

Elements Available on the Book Website

Contents

Introduction

Part I Network Concepts

Chapter 1 Fundamentals of Networking Protocols and Networking Devices

“Do I Know This Already?” Quiz

Foundation Topics

TCP/IP and OSI Model

TCP/IP Model

TCP/IP Model Encapsulation

Networking Communication with the TCP/IP Model

Open Systems Interconnection Model

Layer 2 Fundamentals and Technologies

Ethernet LAN Fundamentals and Technologies

Ethernet Physical Layer

Ethernet Medium Access Control

Ethernet Frame

Ethernet Addresses

Ethernet Devices and Frame-Forwarding Behavior

LAN Hubs and Bridges

LAN Switches

Link Layer Loop and Spanning Tree Protocols

Virtual LAN (VLAN) and VLAN Trunking

Cisco VLAN Trunking Protocol

Inter-VLAN Traffic and Multilayer Switches

Wireless LAN Fundamentals and Technologies

802.11 Architecture and Basic Concepts

802.11 Frame

WLAN Access Point Types and Management

Internet Protocol and Layer 3 Technologies

IPv4 Header

IPv4 Fragmentation

IPv4 Addresses and Addressing Architecture

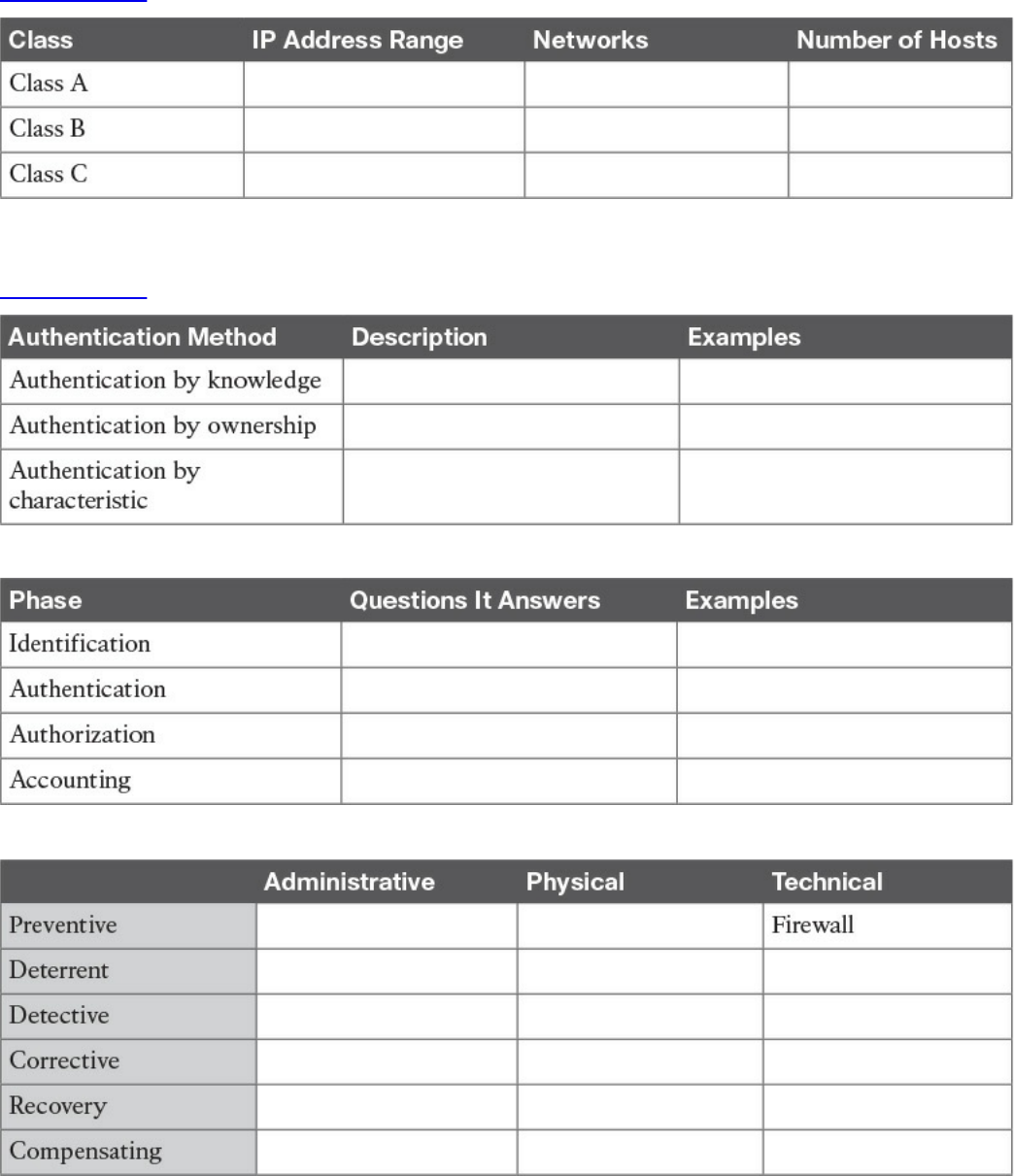

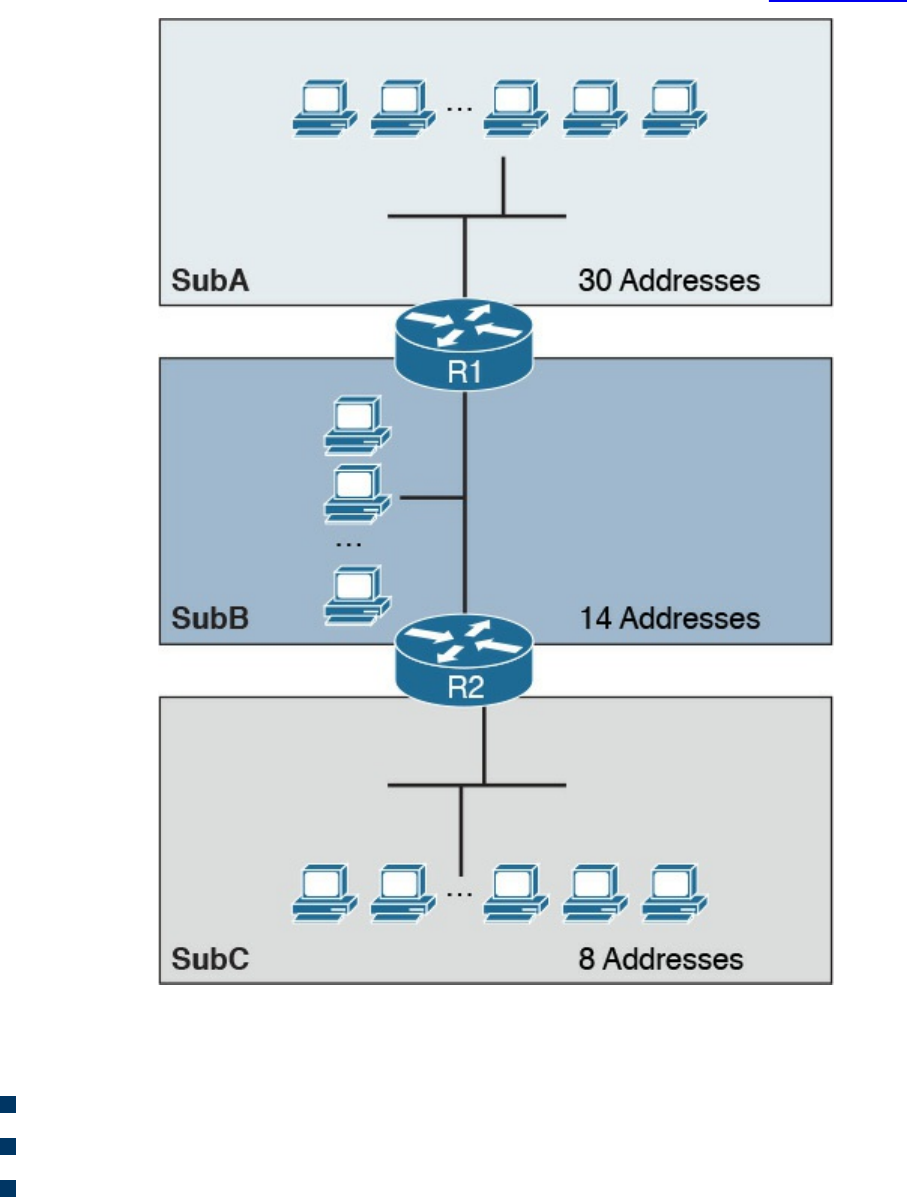

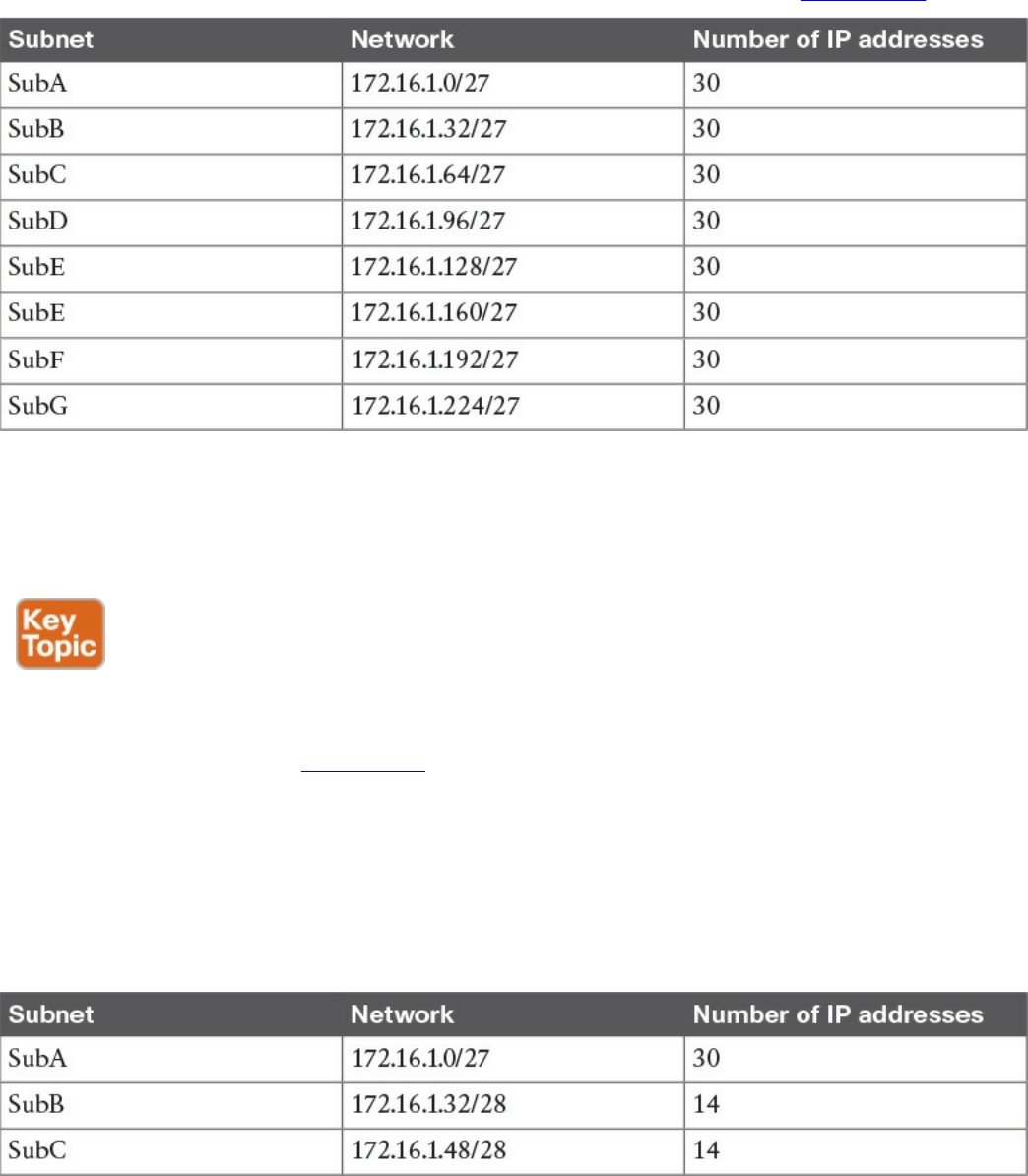

IP Network Subnetting and Classless Interdomain Routing (CIDR)

Variable-Length Subnet Mask (VLSM)

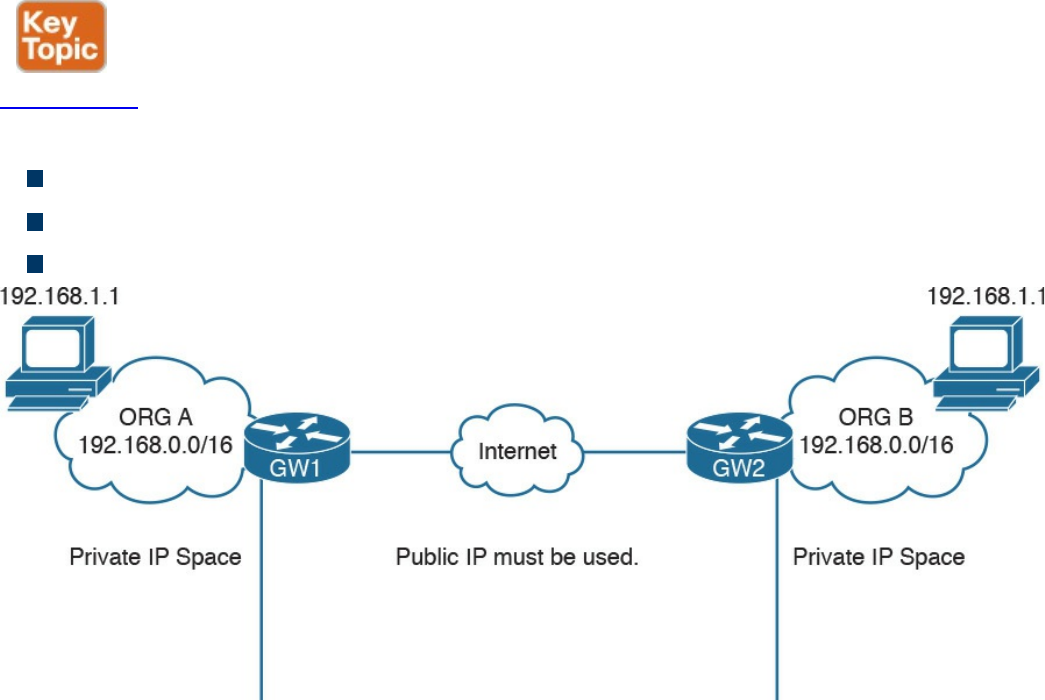

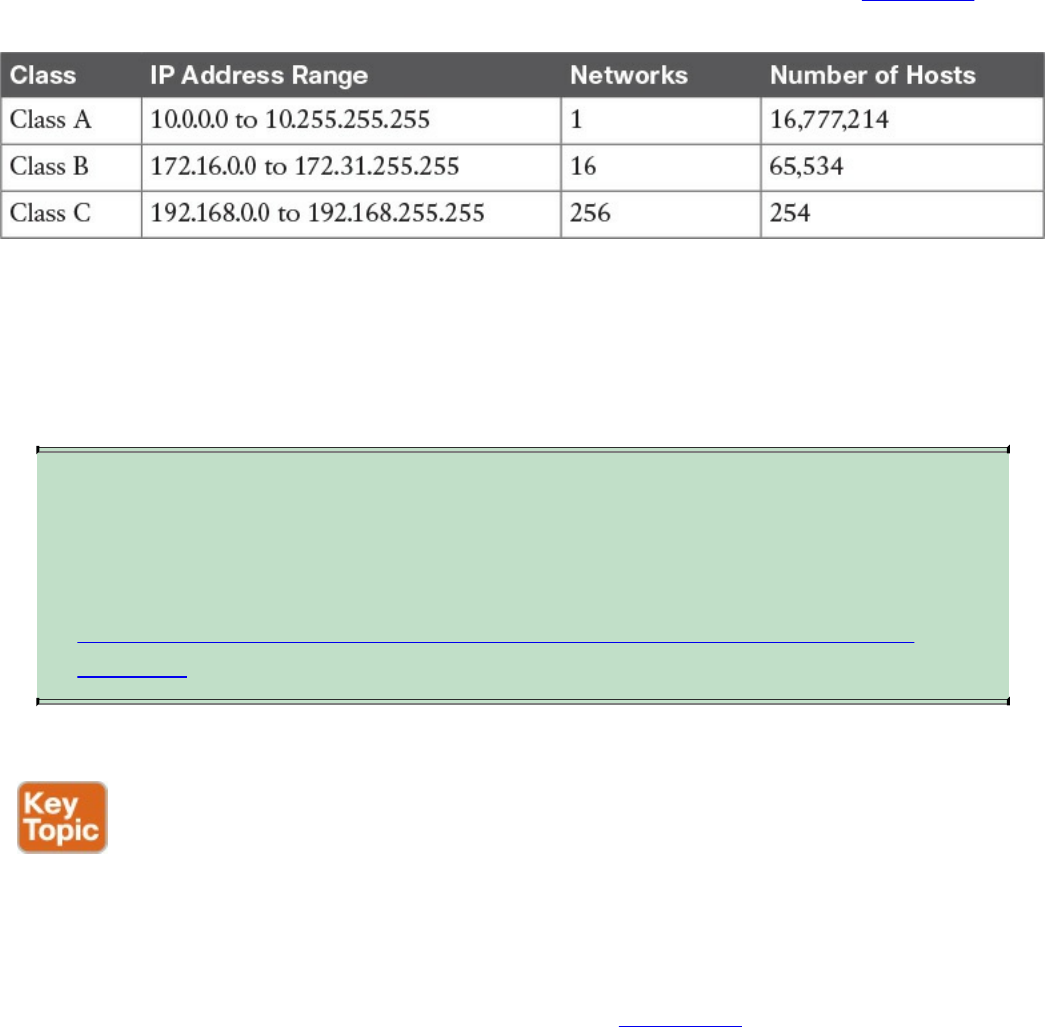

Public and Private IP Addresses

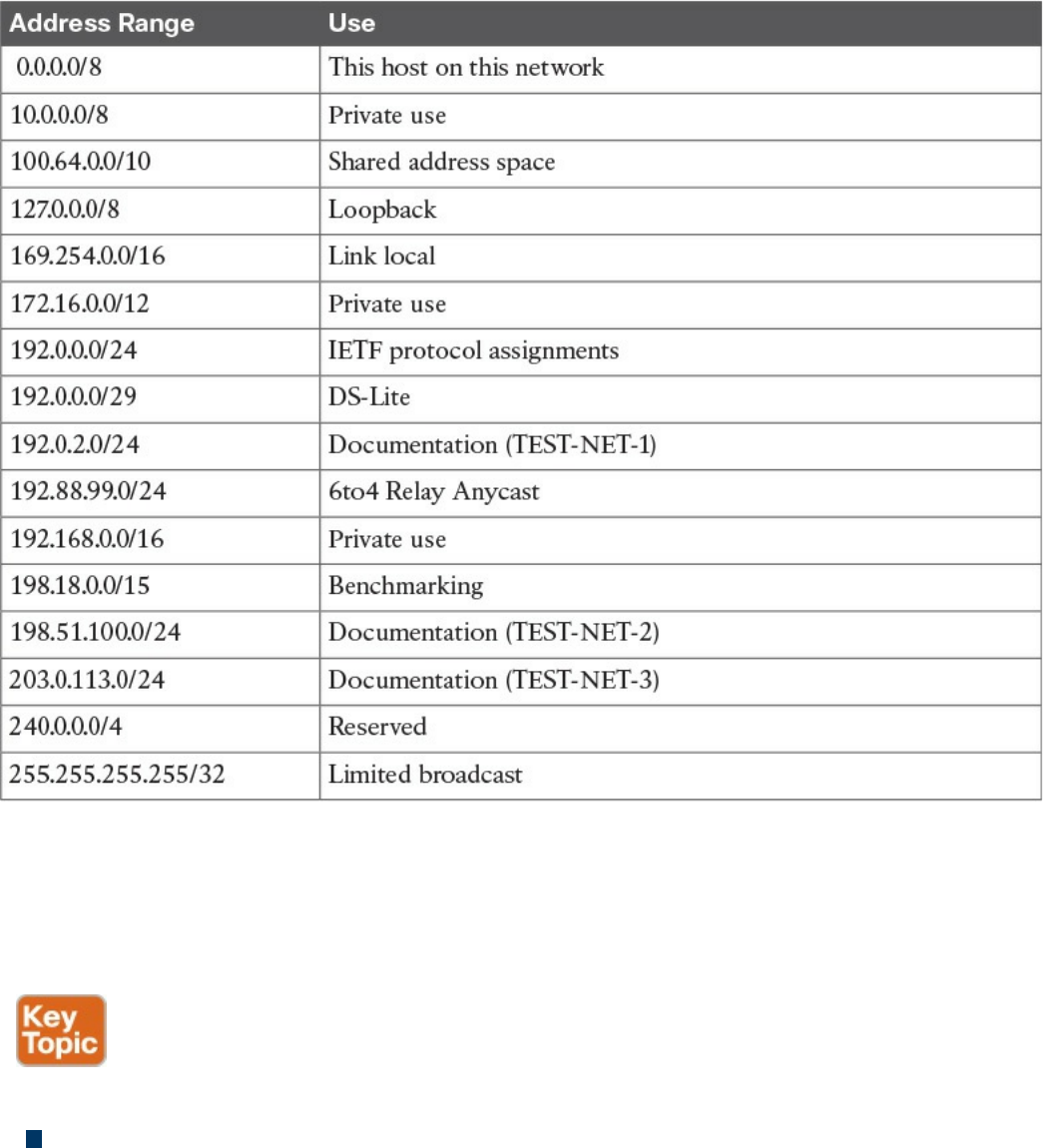

Special and Reserved IPv4 Addresses

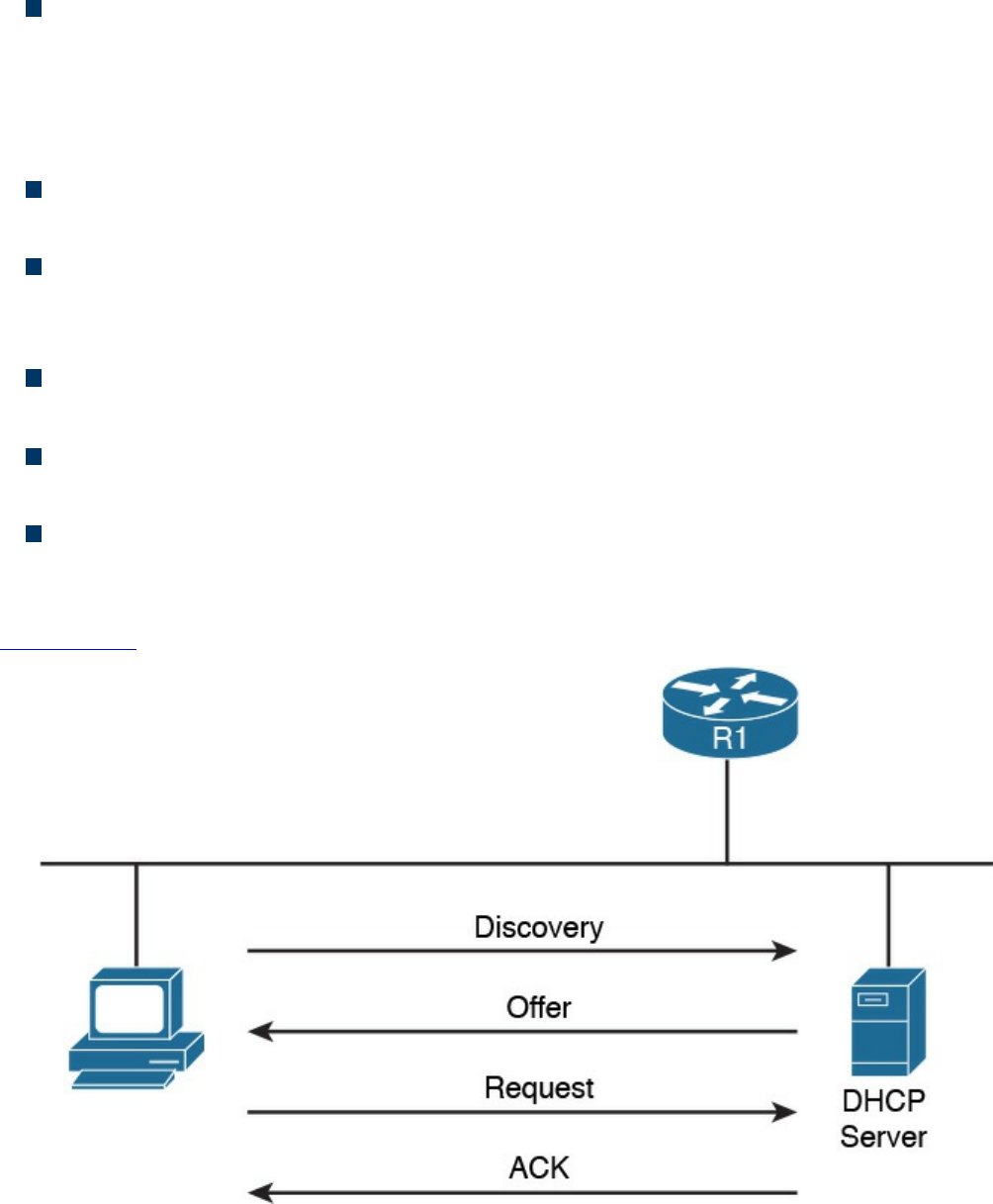

IP Addresses Assignment and DHCP

IP Communication Within a Subnet and Address Resolution Protocol (ARP)

Intersubnet IP Packet Routing

Routing Tables and IP Routing Protocols

Distance Vector

Advanced Distance Vector or Hybrid

Link-State

Using Multiple Routing Protocols

Internet Control Message Protocol (ICMP)

Domain Name System (DNS)

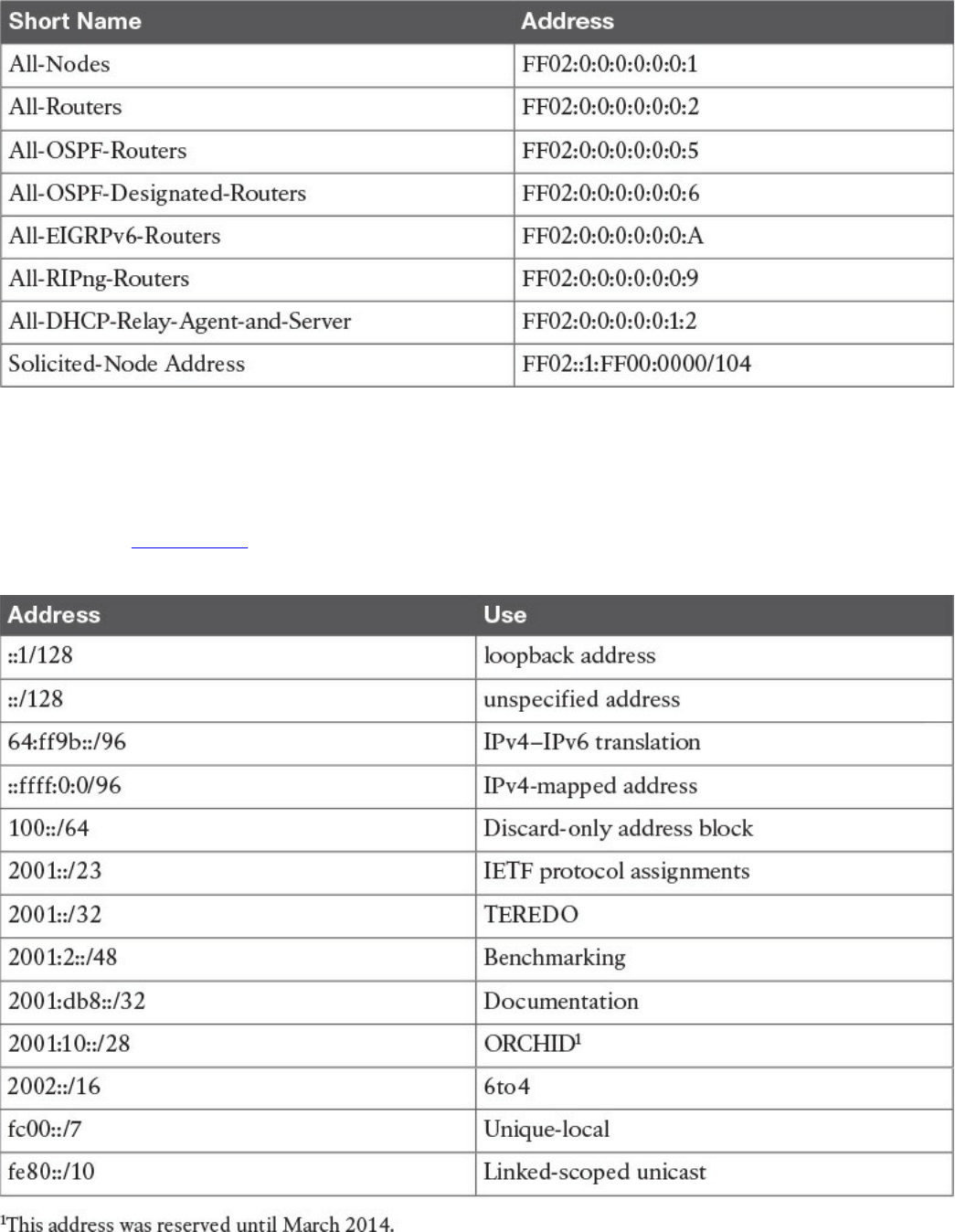

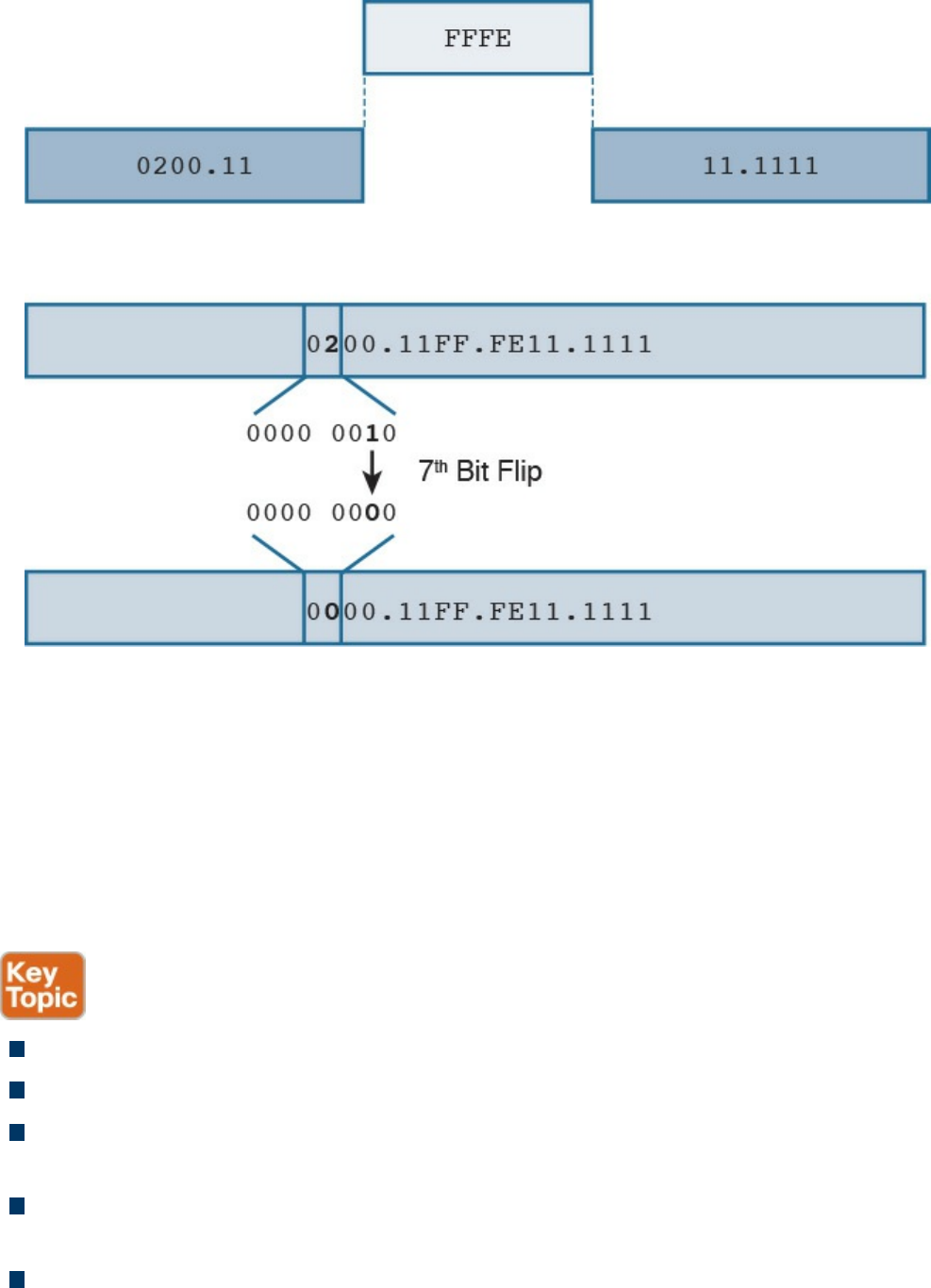

IPv6 Fundamentals

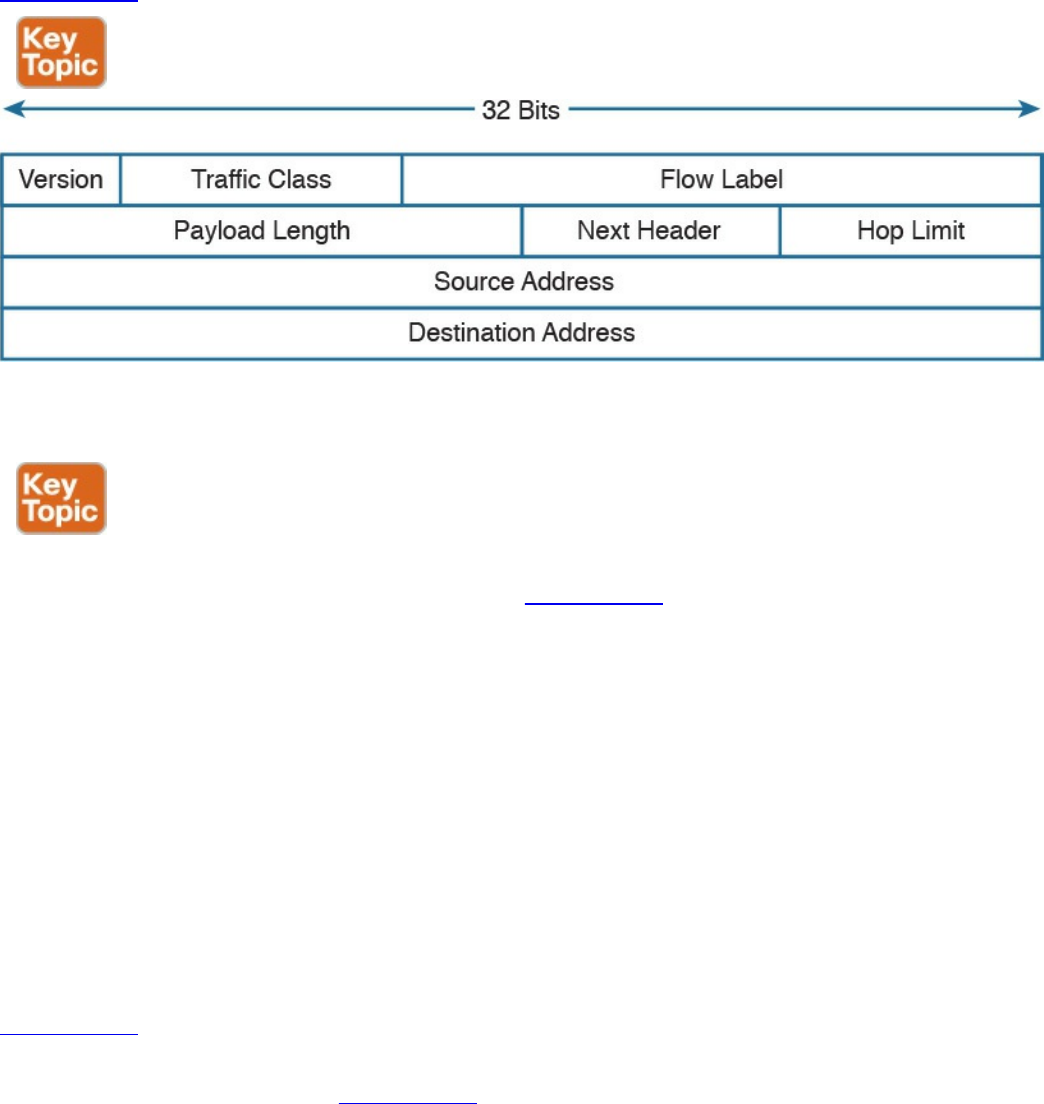

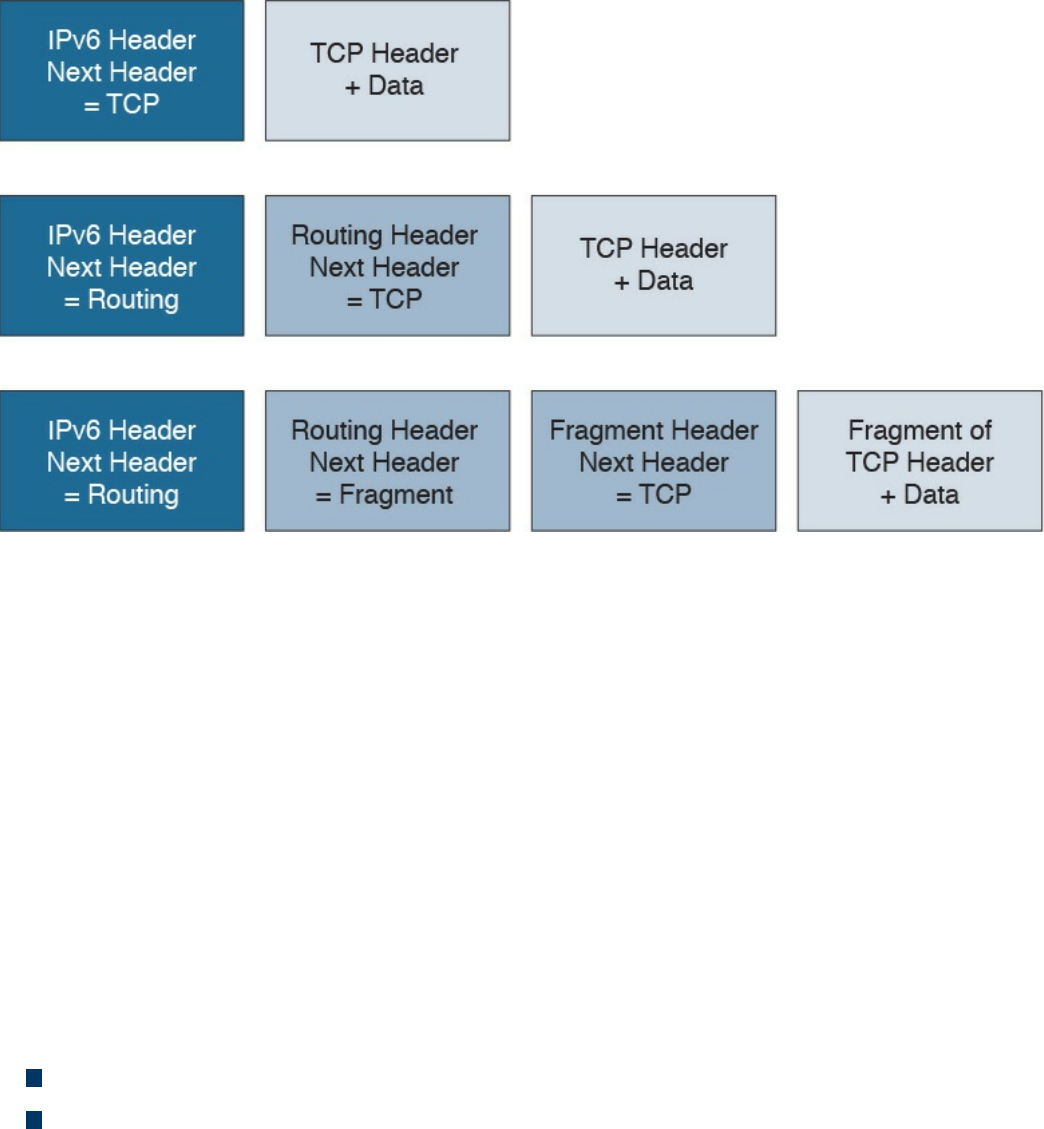

IPv6 Header

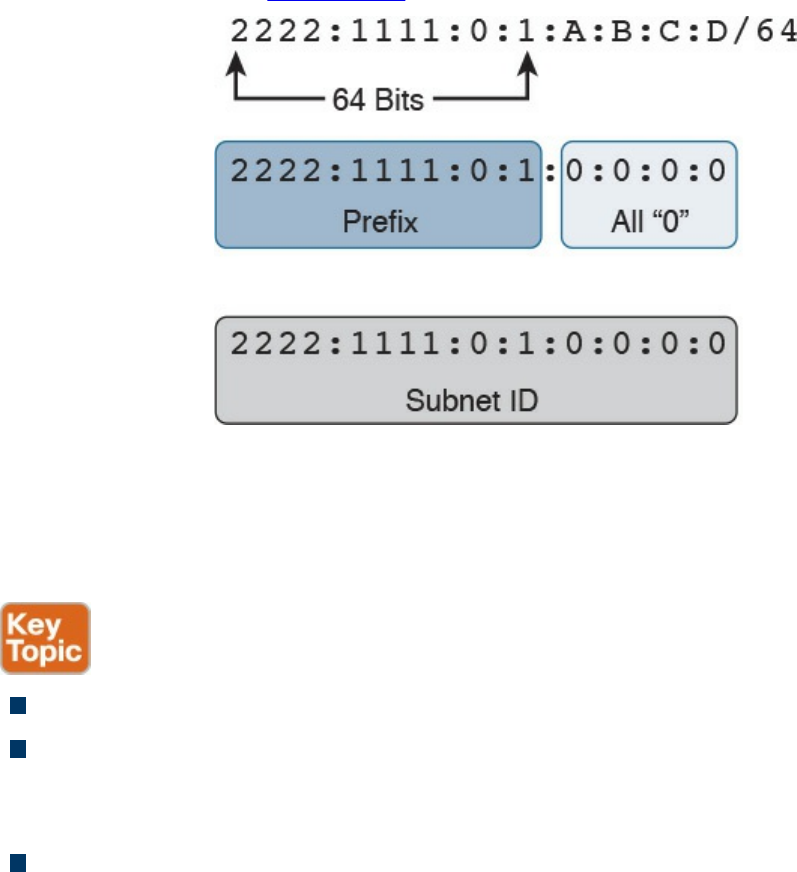

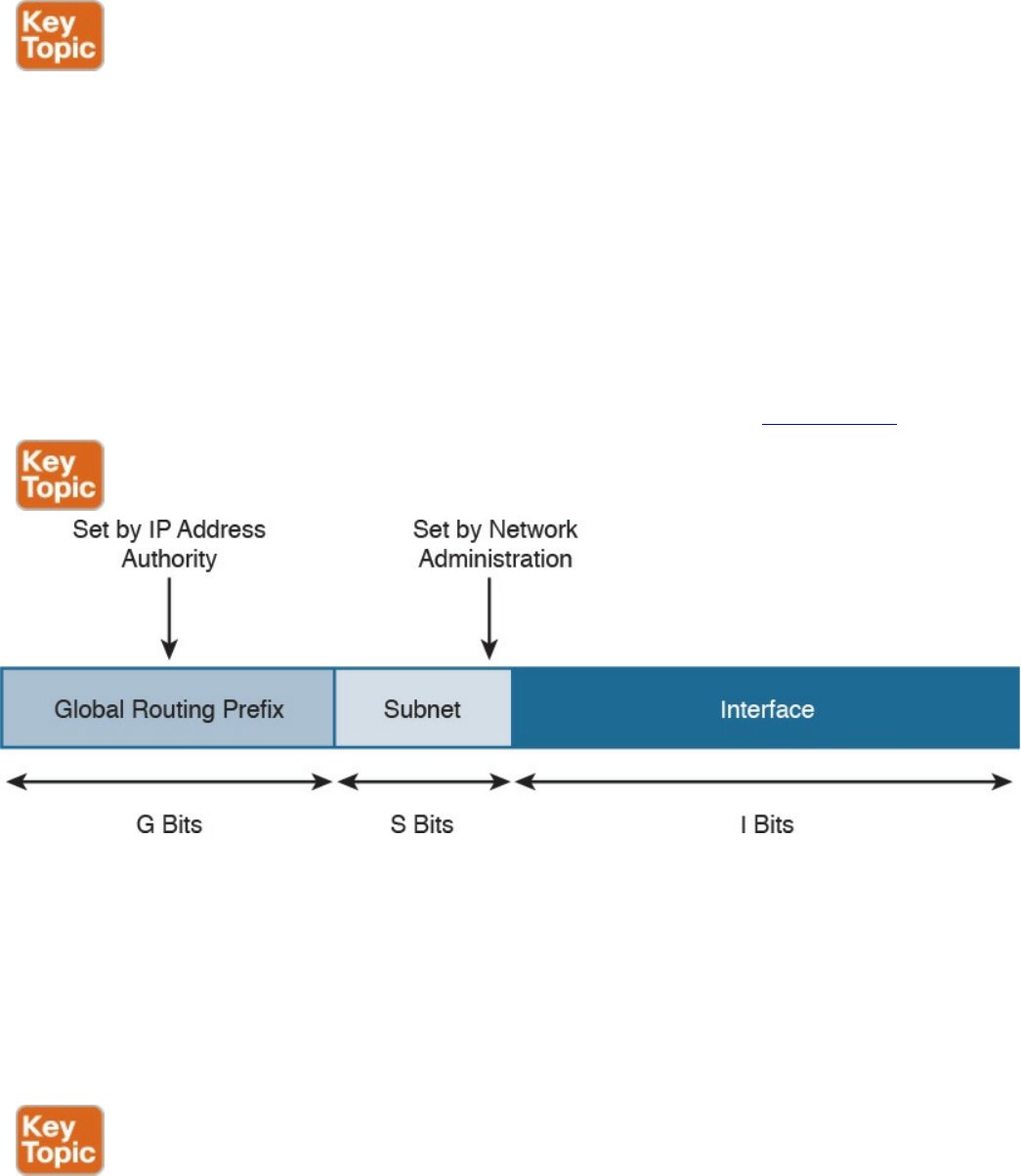

IPv6 Addressing and Subnets

Special and Reserved IPv6 Addresses

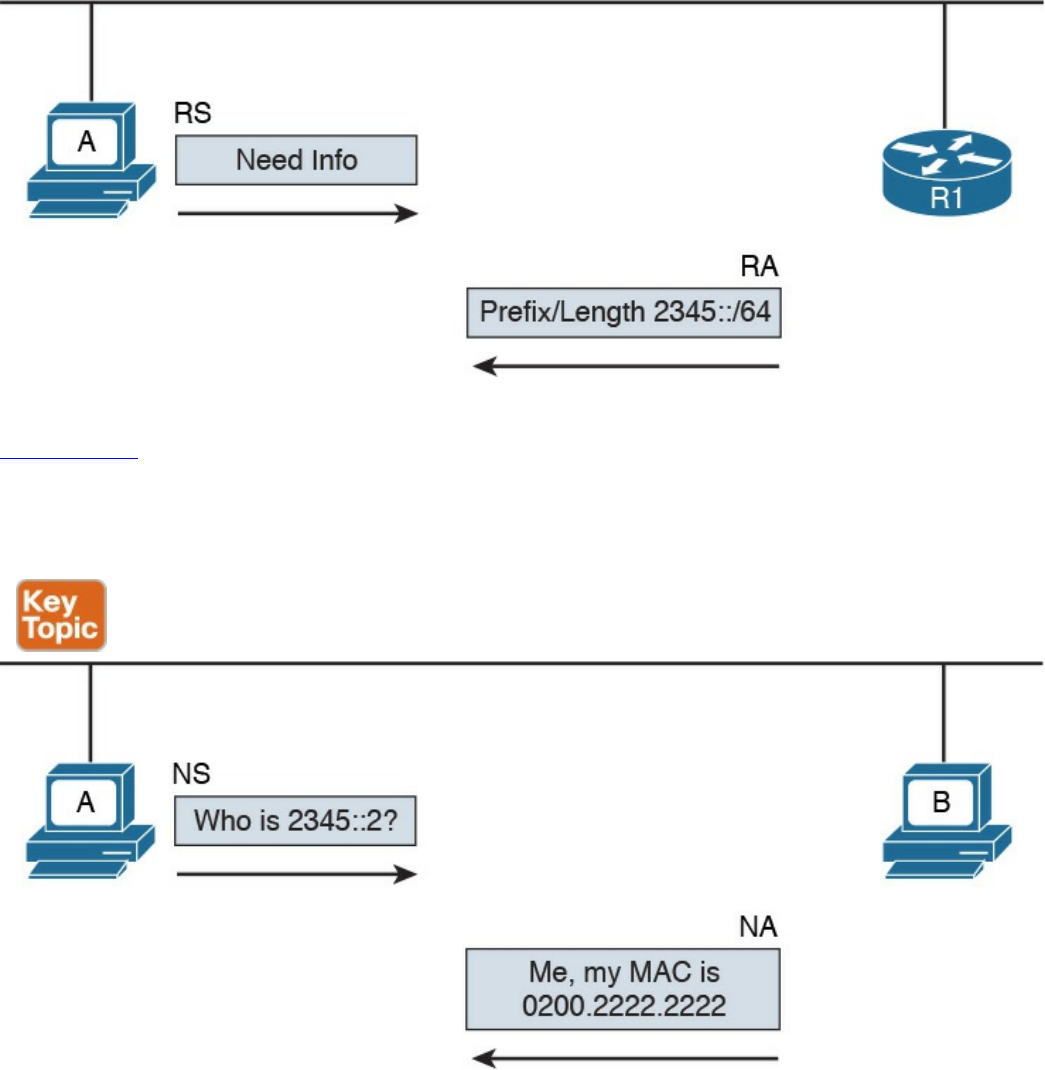

IPv6 Addresses Assignment, Neighbor Discovery Protocol, and DHCPv6

Transport Layer Technologies and Protocols

Transmission Control Protocol (TCP)

TCP Header

TCP Connection Establishment and Termination

TCP Socket

TCP Error Detection and Recovery

TCP Flow Control

User Datagram Protocol (UDP)

UDP Header

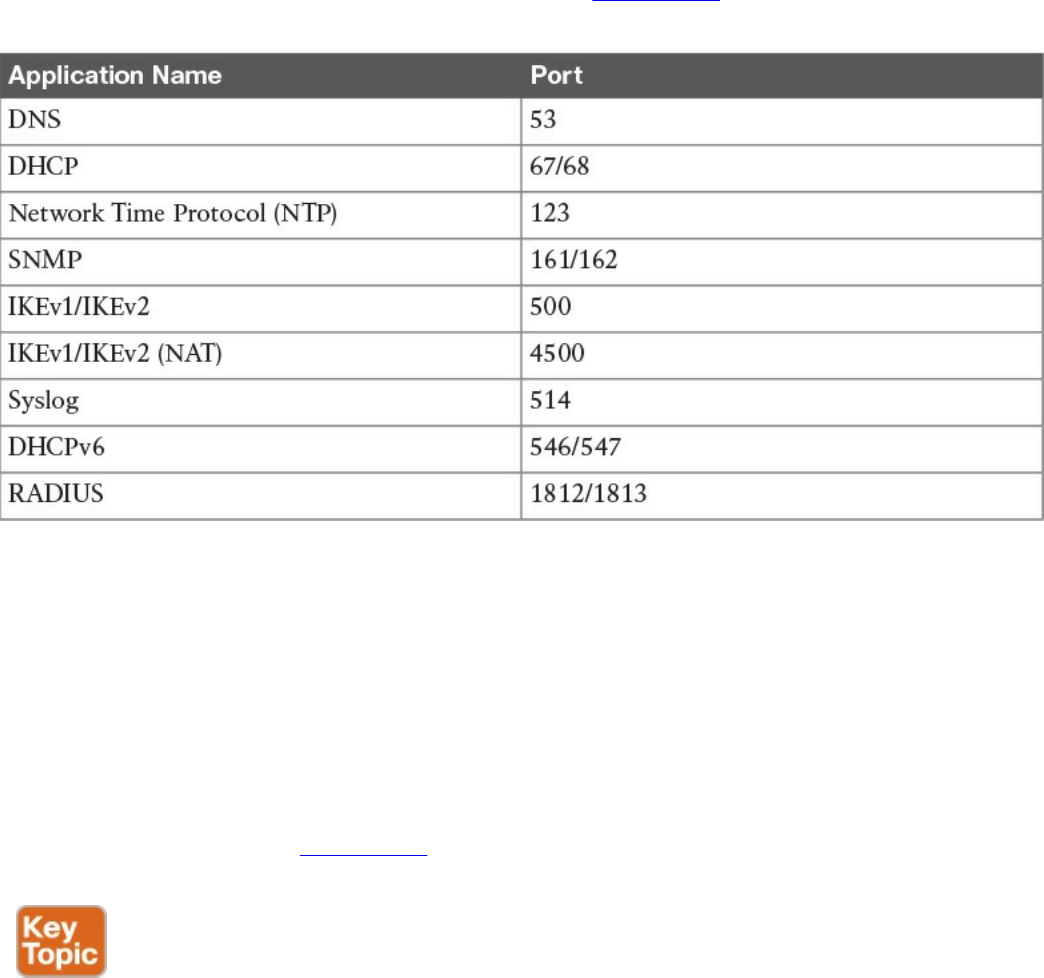

UDP Socket and Known UDP Application

Exam Preparation Tasks

www.hellodigi.ir

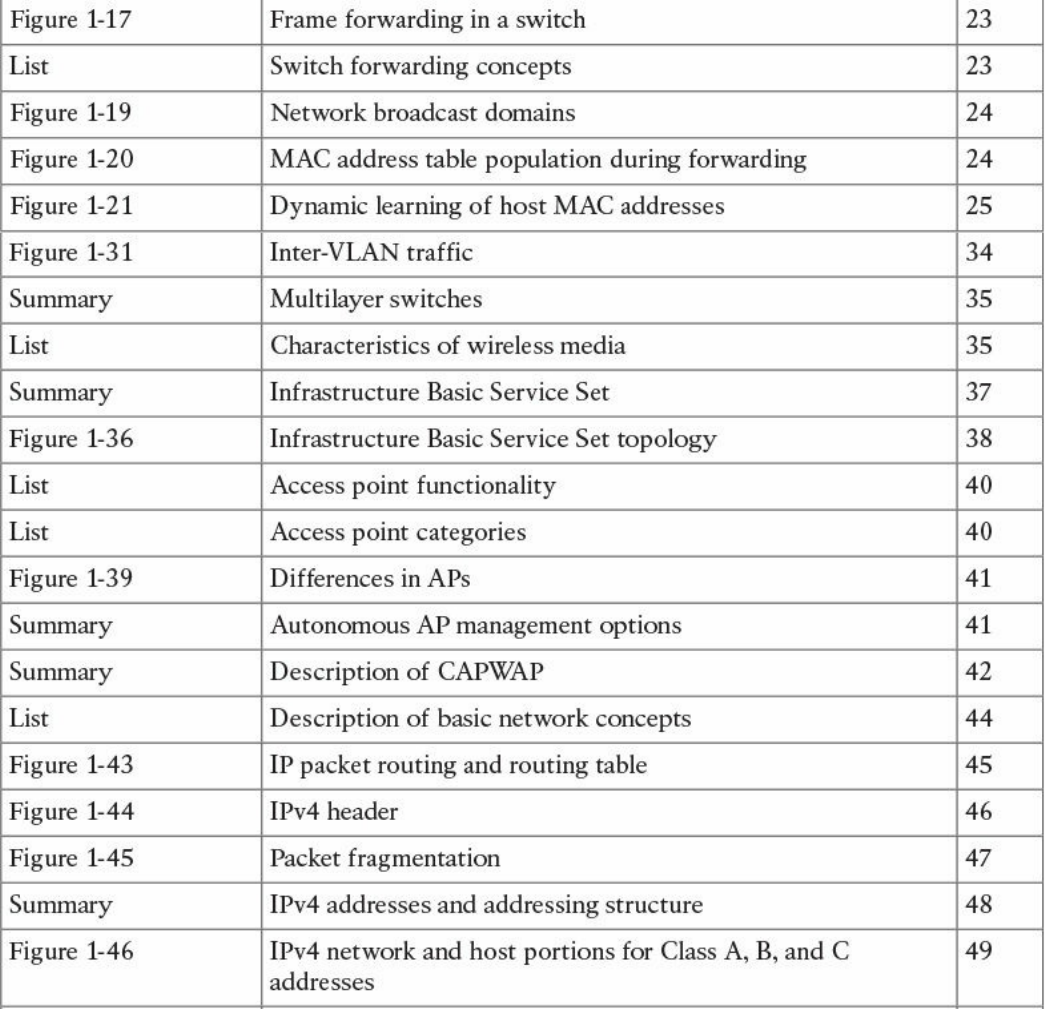

Review All Key Topics

Complete Tables and Lists from Memory

Define Key Terms

Q&A

References and Further Reading

Chapter 2 Network Security Devices and Cloud Services

“Do I Know This Already?” Quiz

Foundation Topics

Network Security Systems

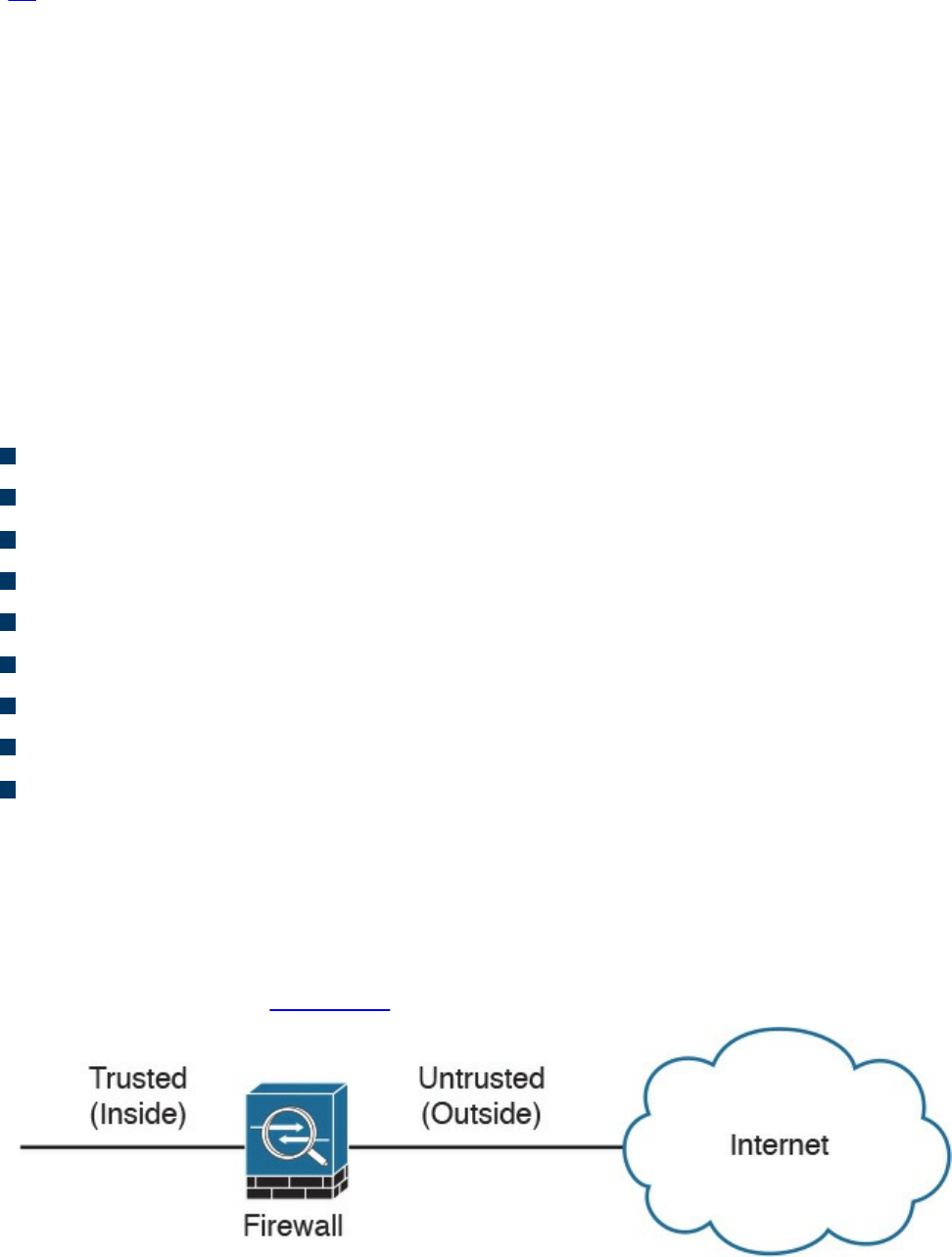

Traditional Firewalls

Packet-Filtering Techniques

Application Proxies

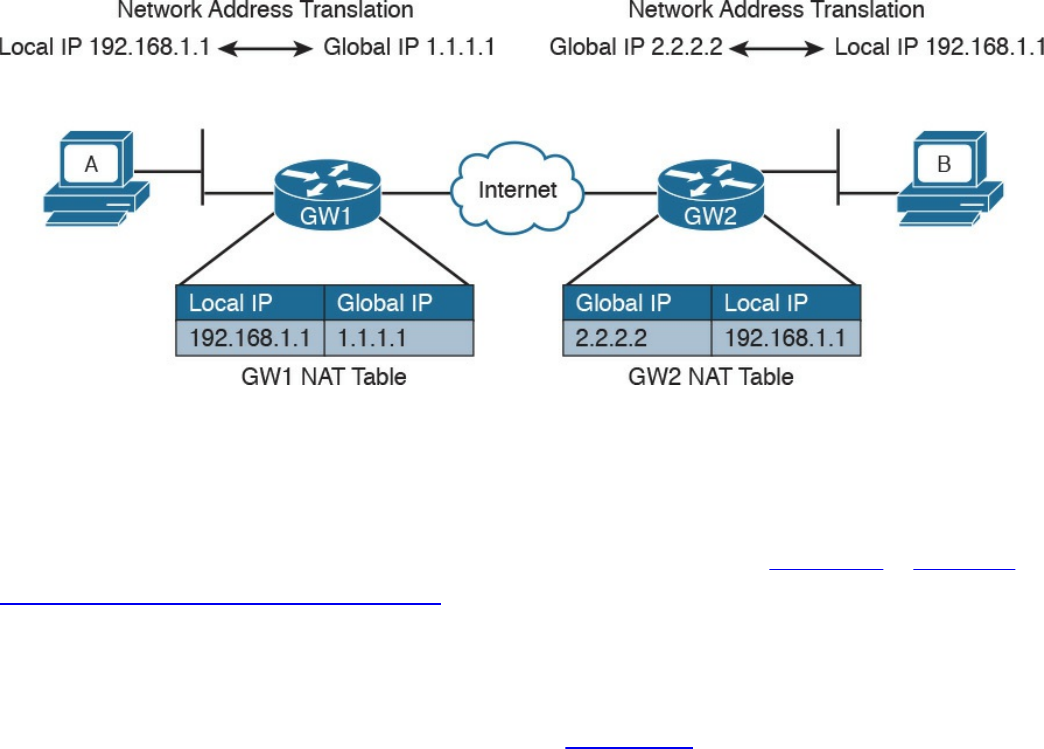

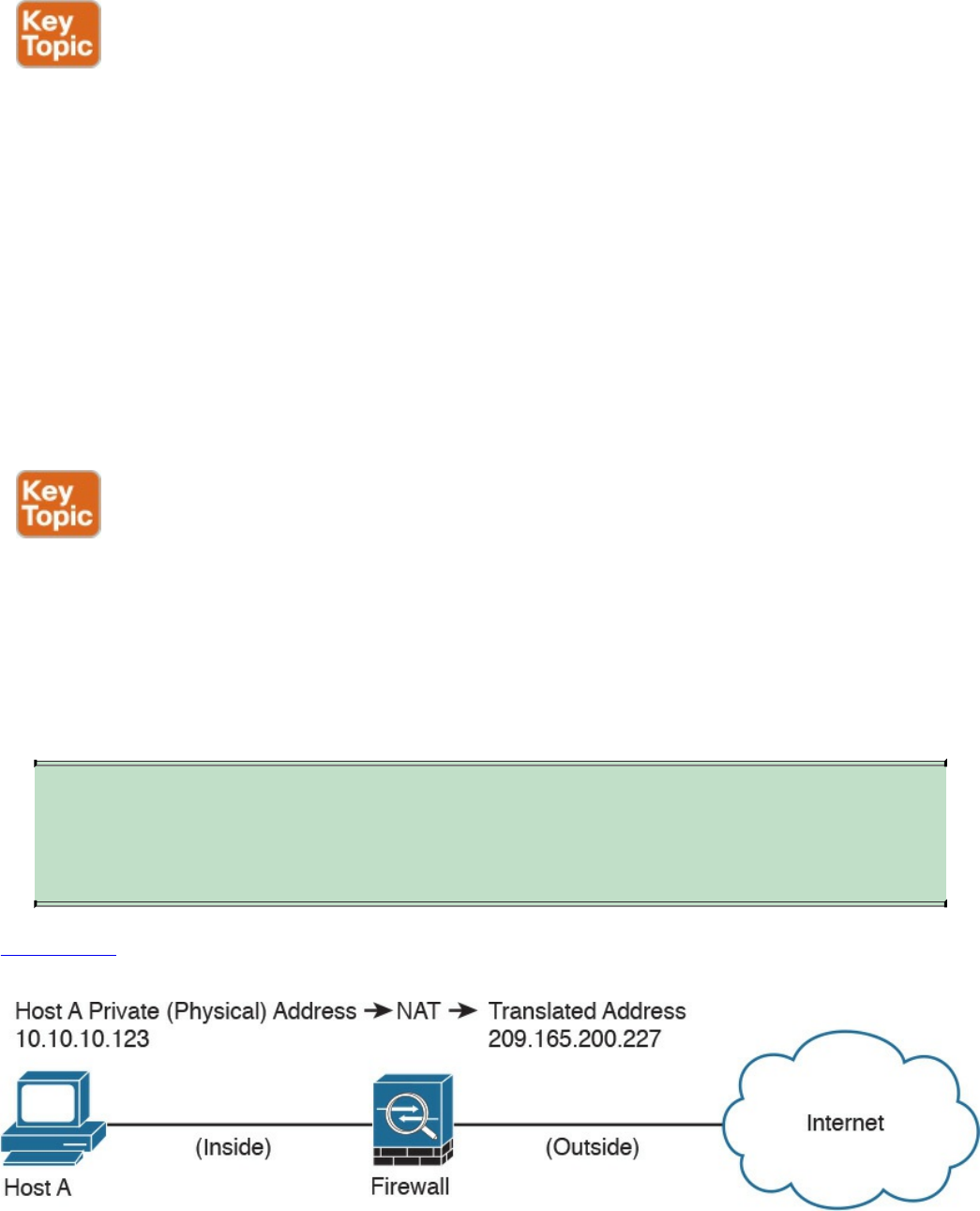

Network Address Translation

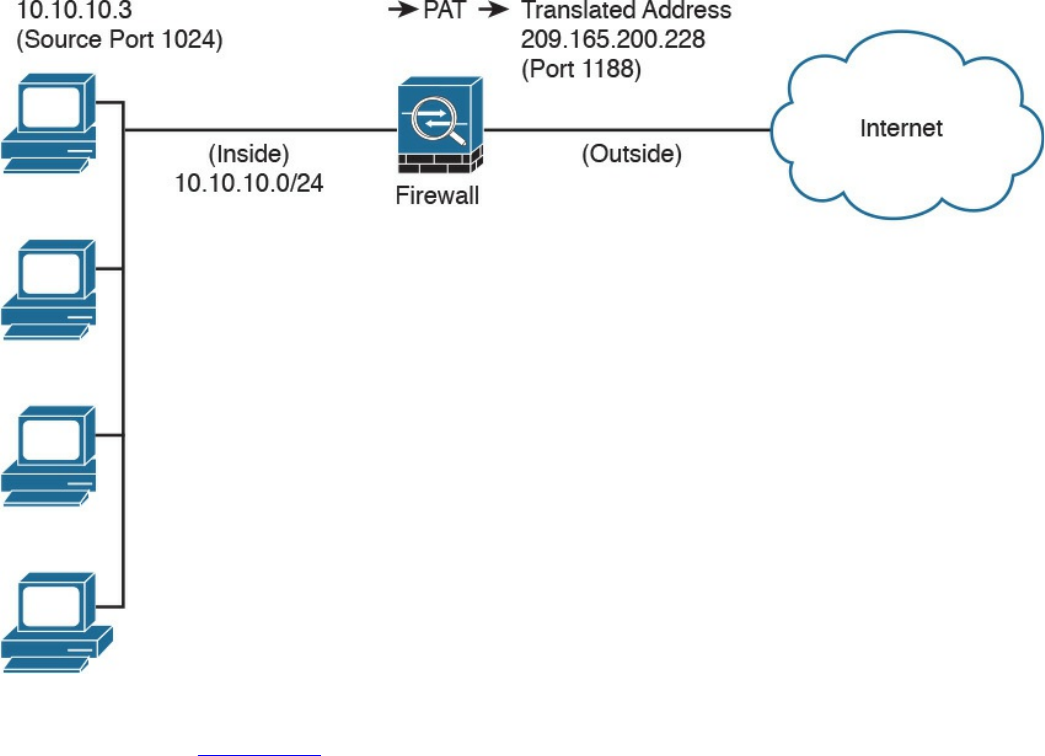

Port Address Translation

Static Translation

Stateful Inspection Firewalls

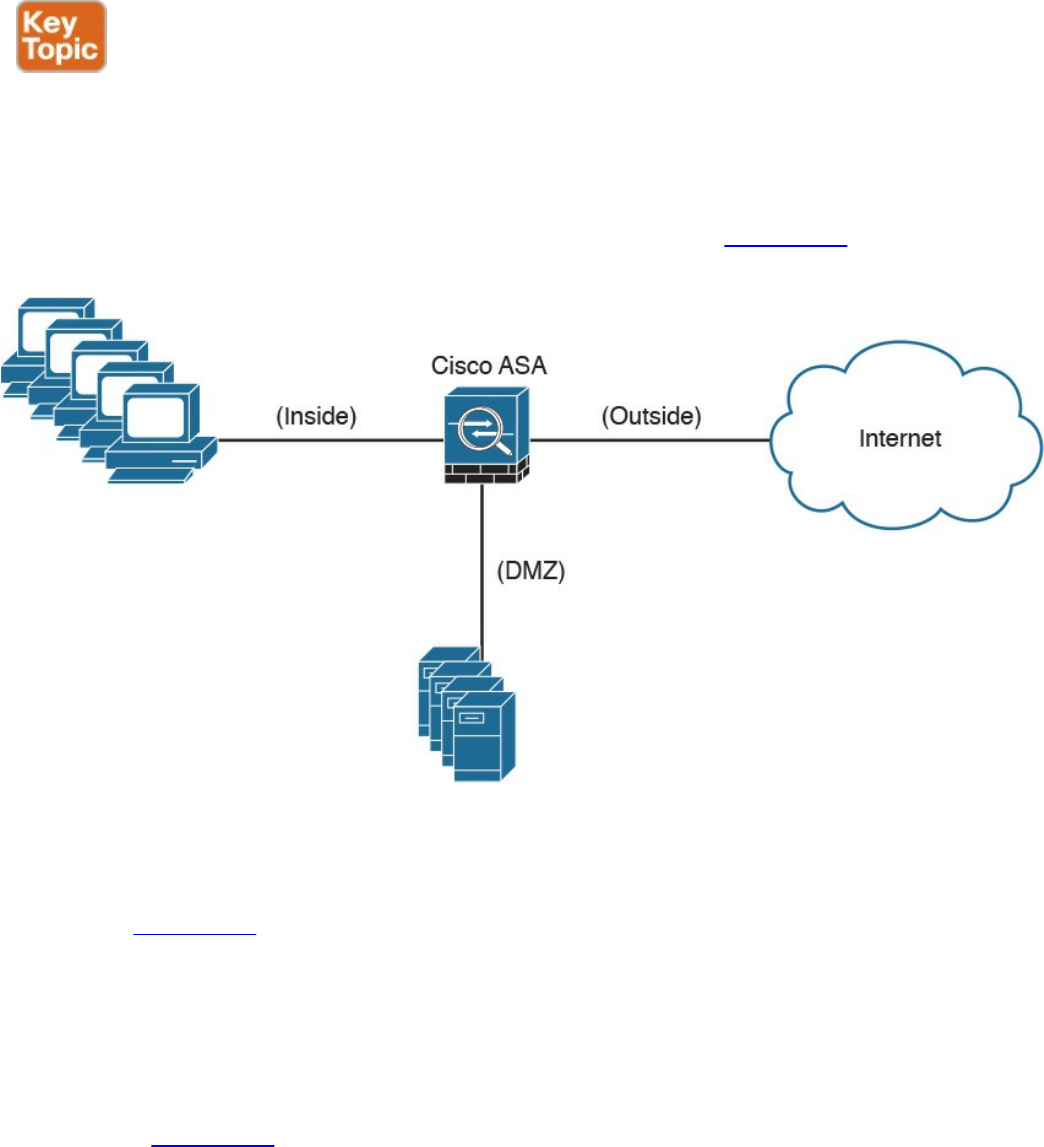

Demilitarized Zones

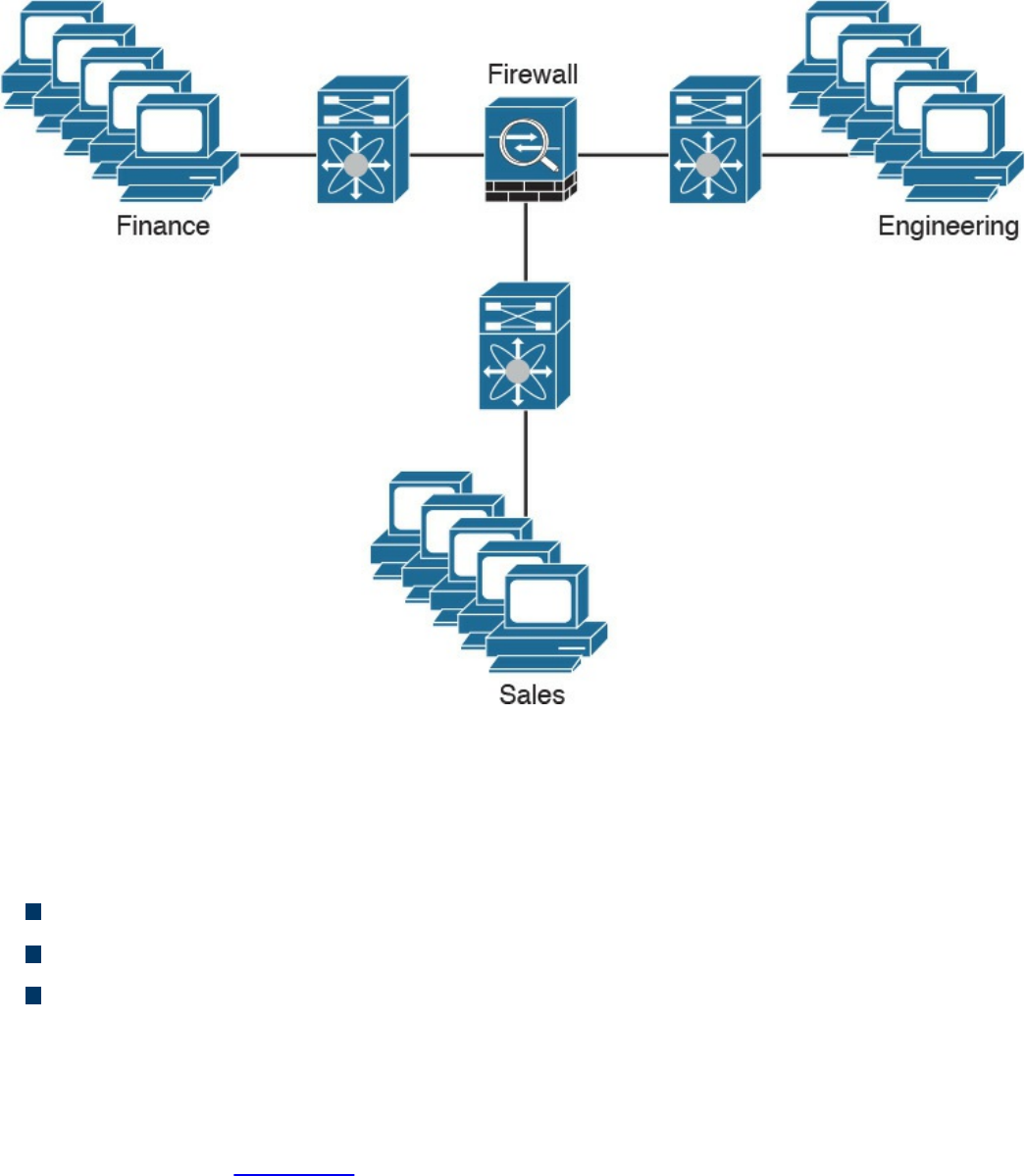

Firewalls Provide Network Segmentation

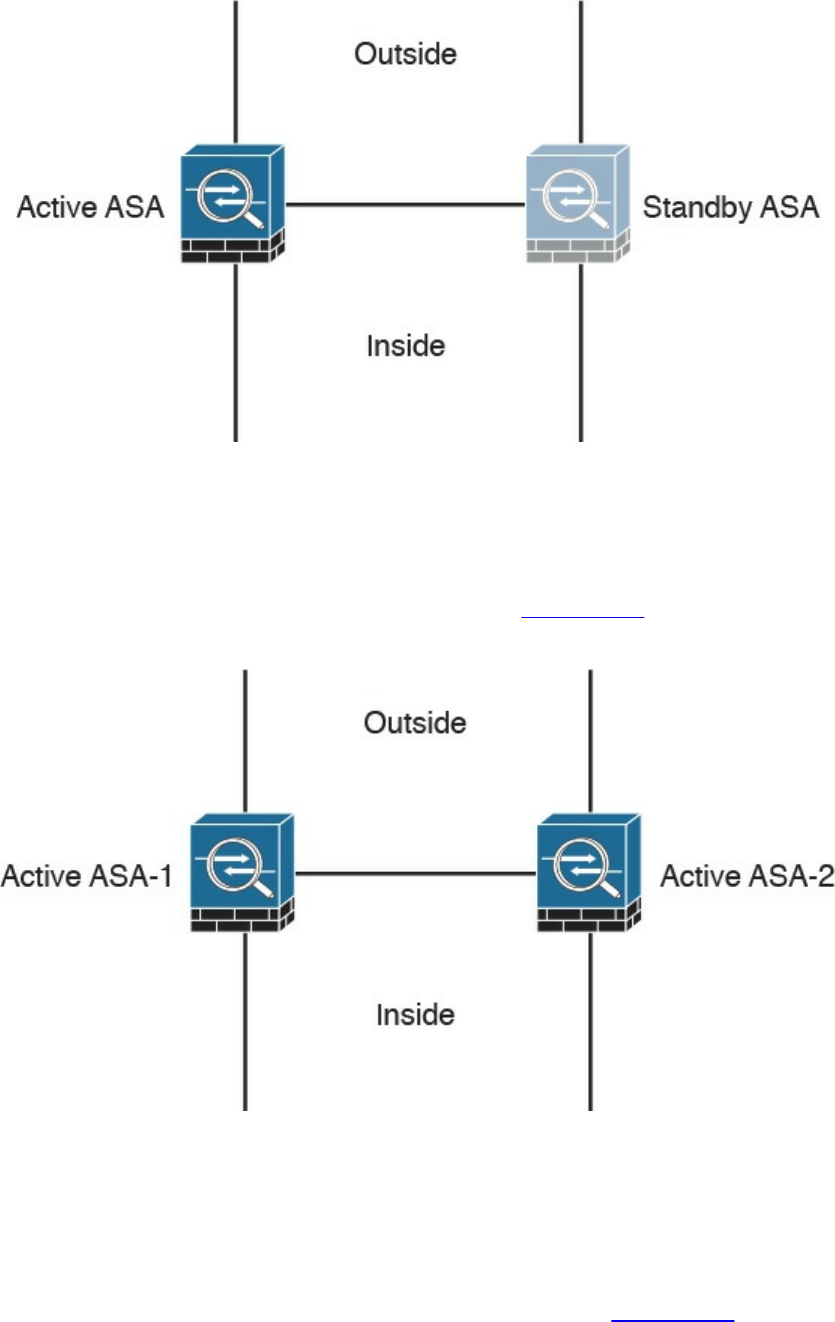

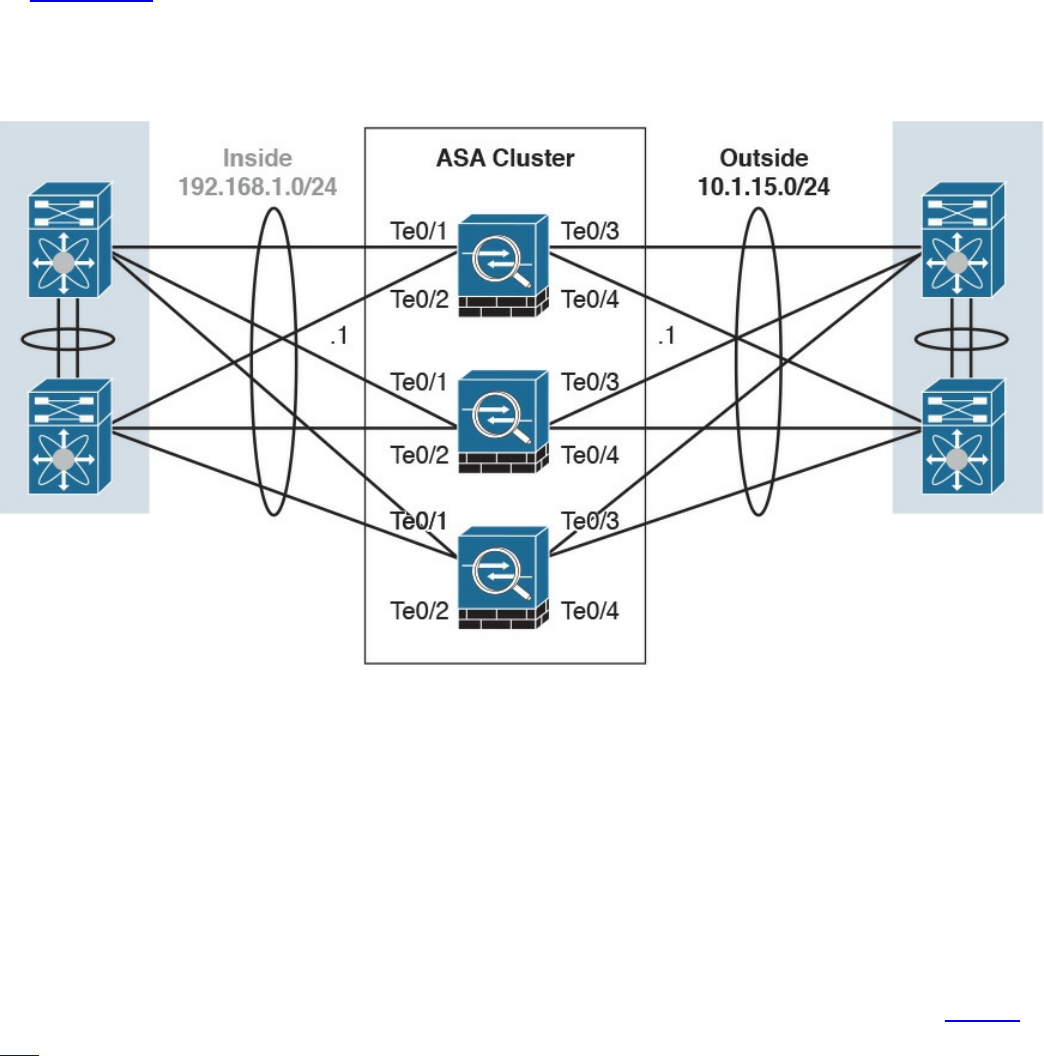

High Availability

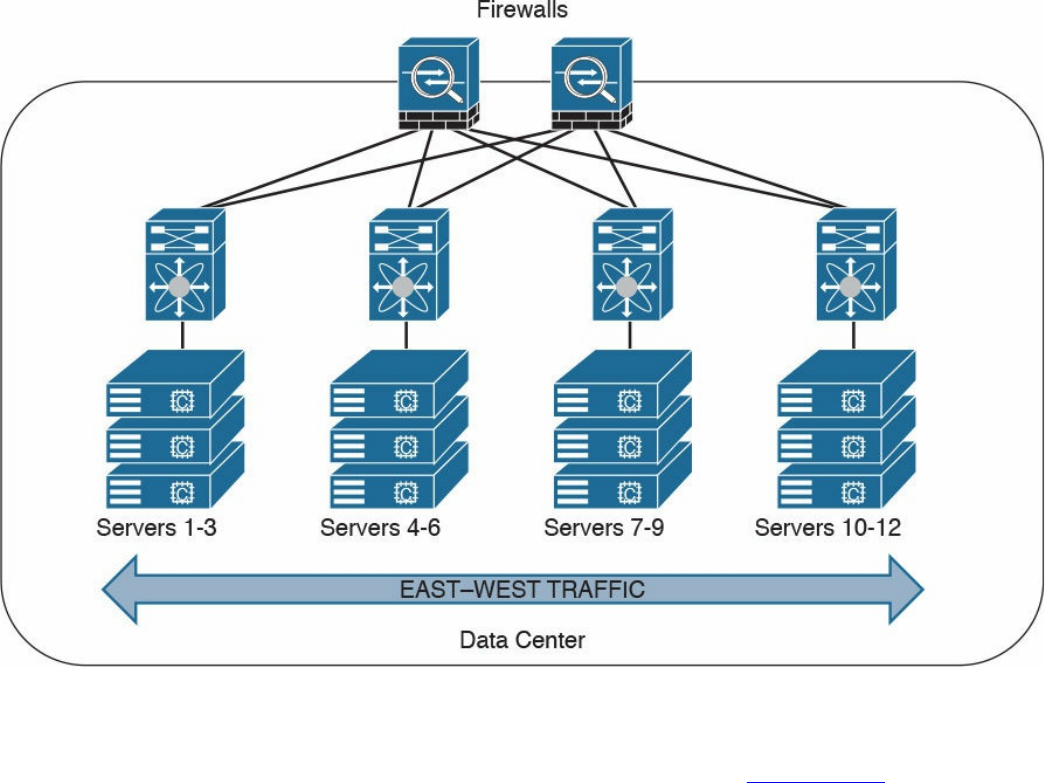

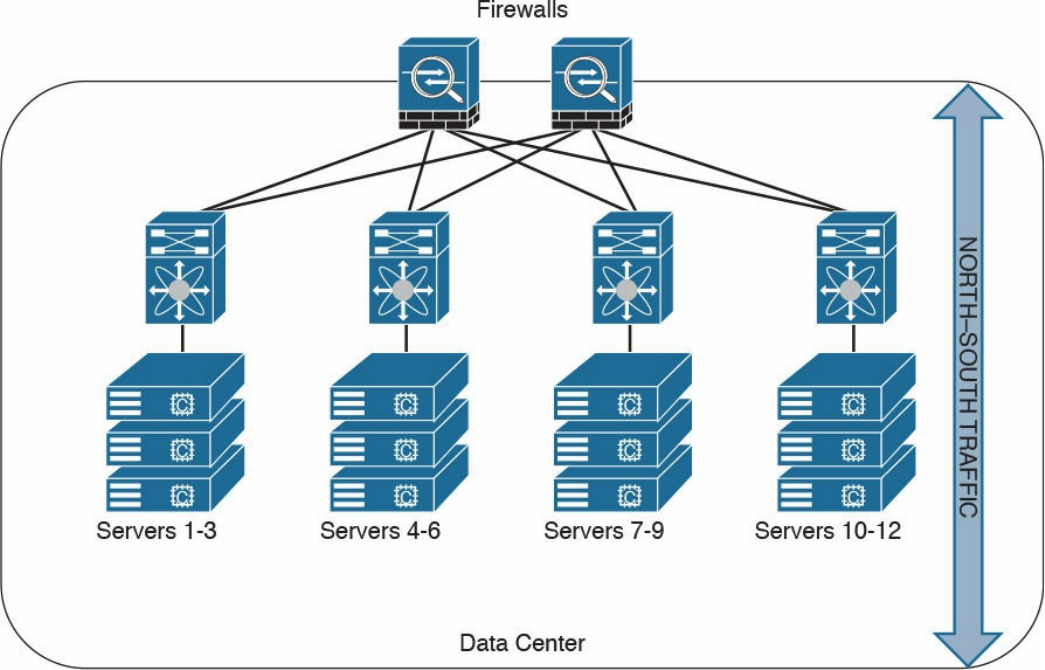

Firewalls in the Data Center

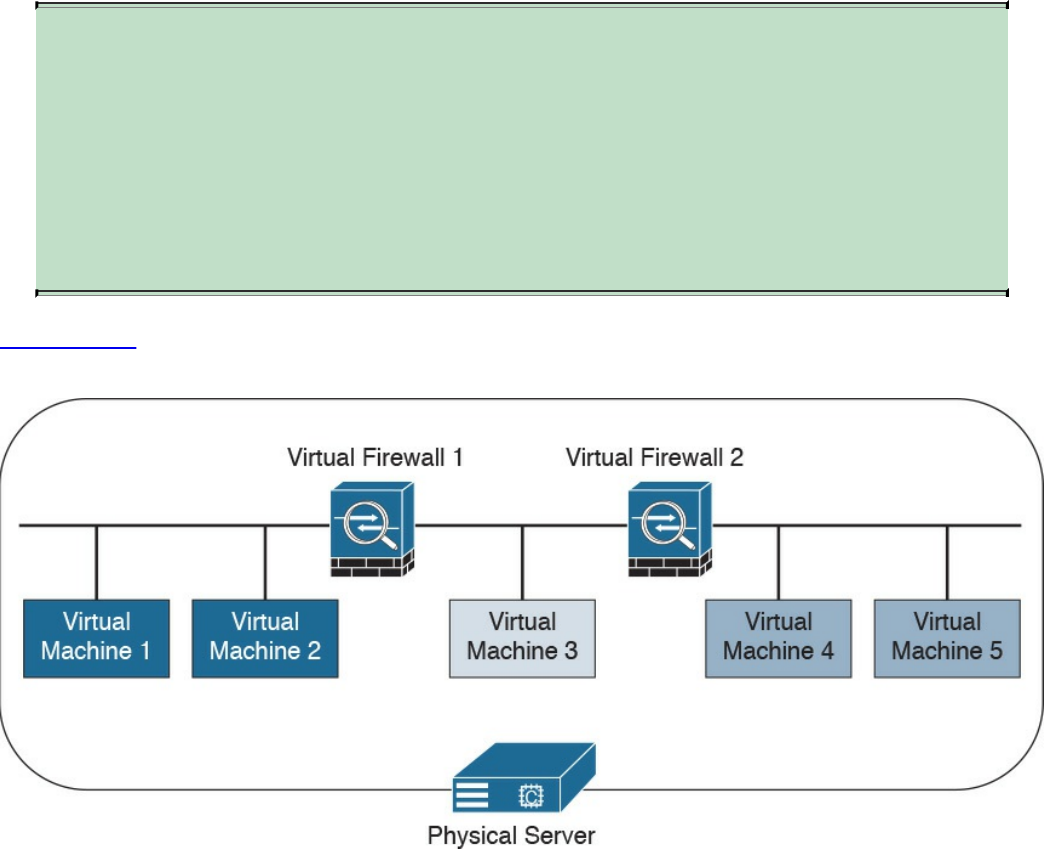

Virtual Firewalls

Deep Packet Inspection

Next-Generation Firewalls

Cisco Firepower Threat Defense

Personal Firewalls

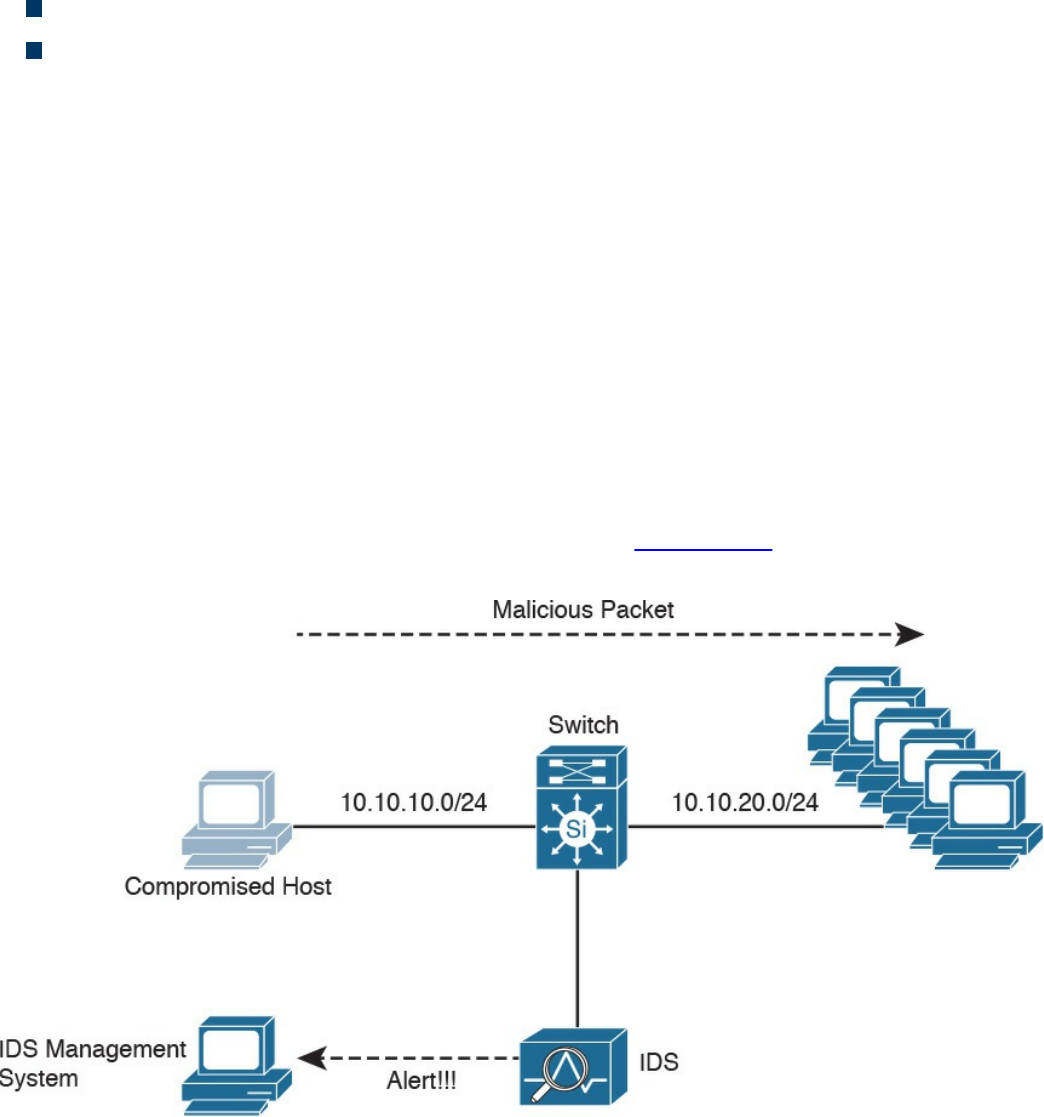

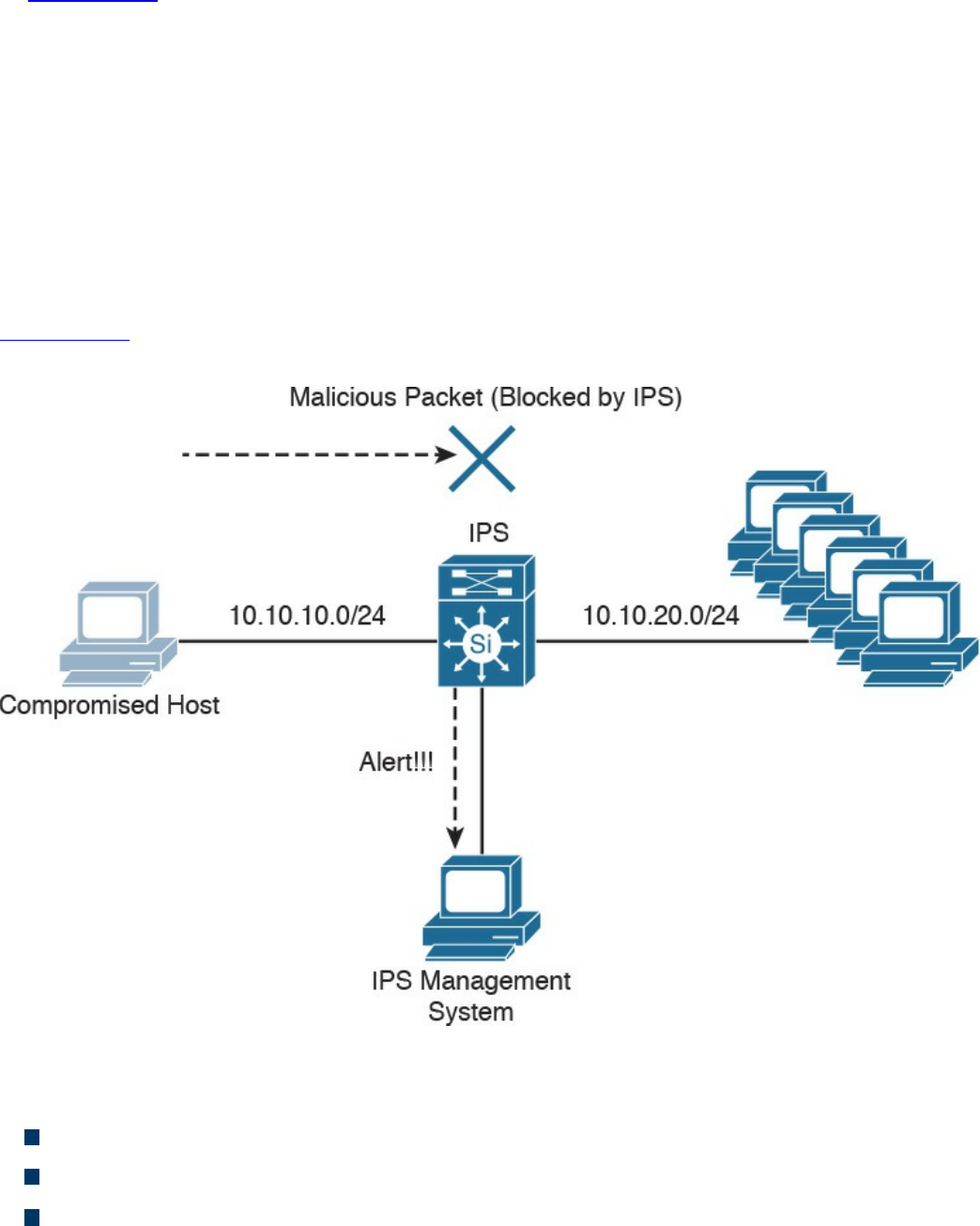

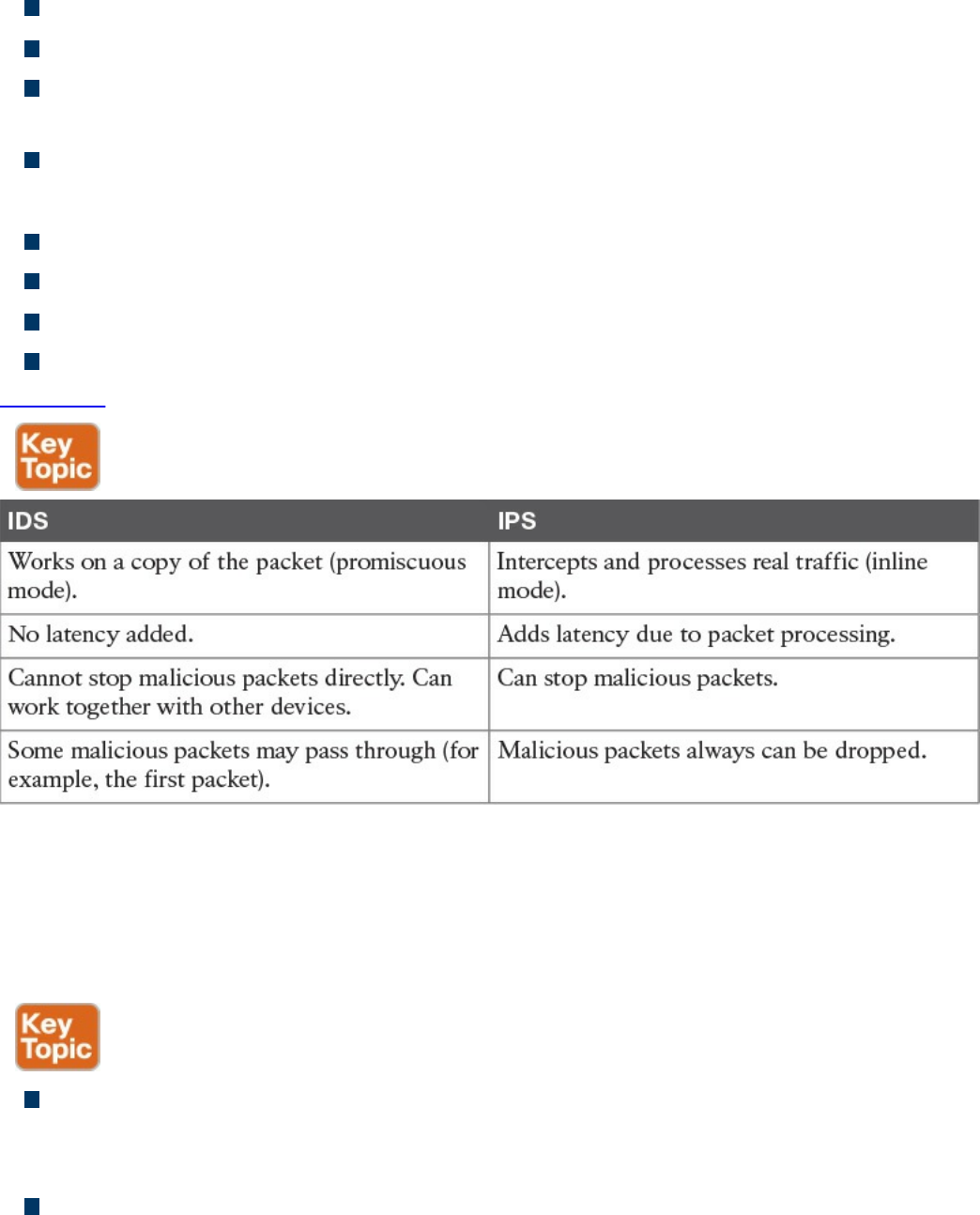

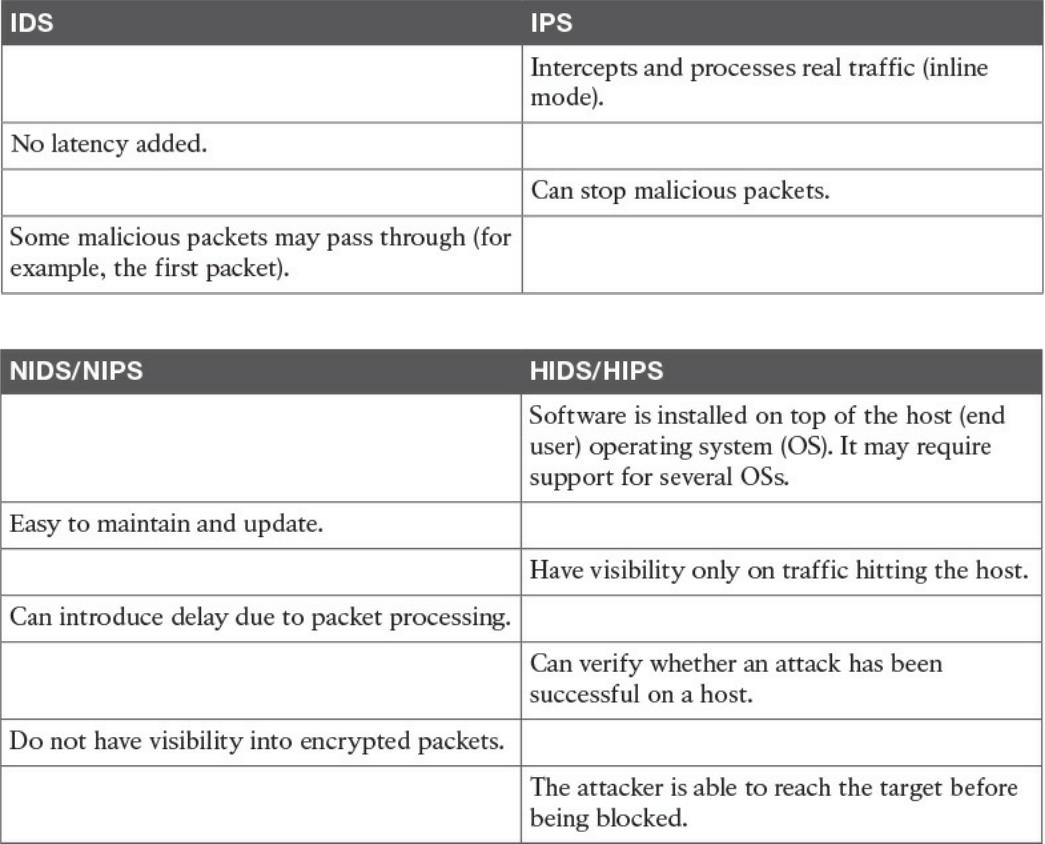

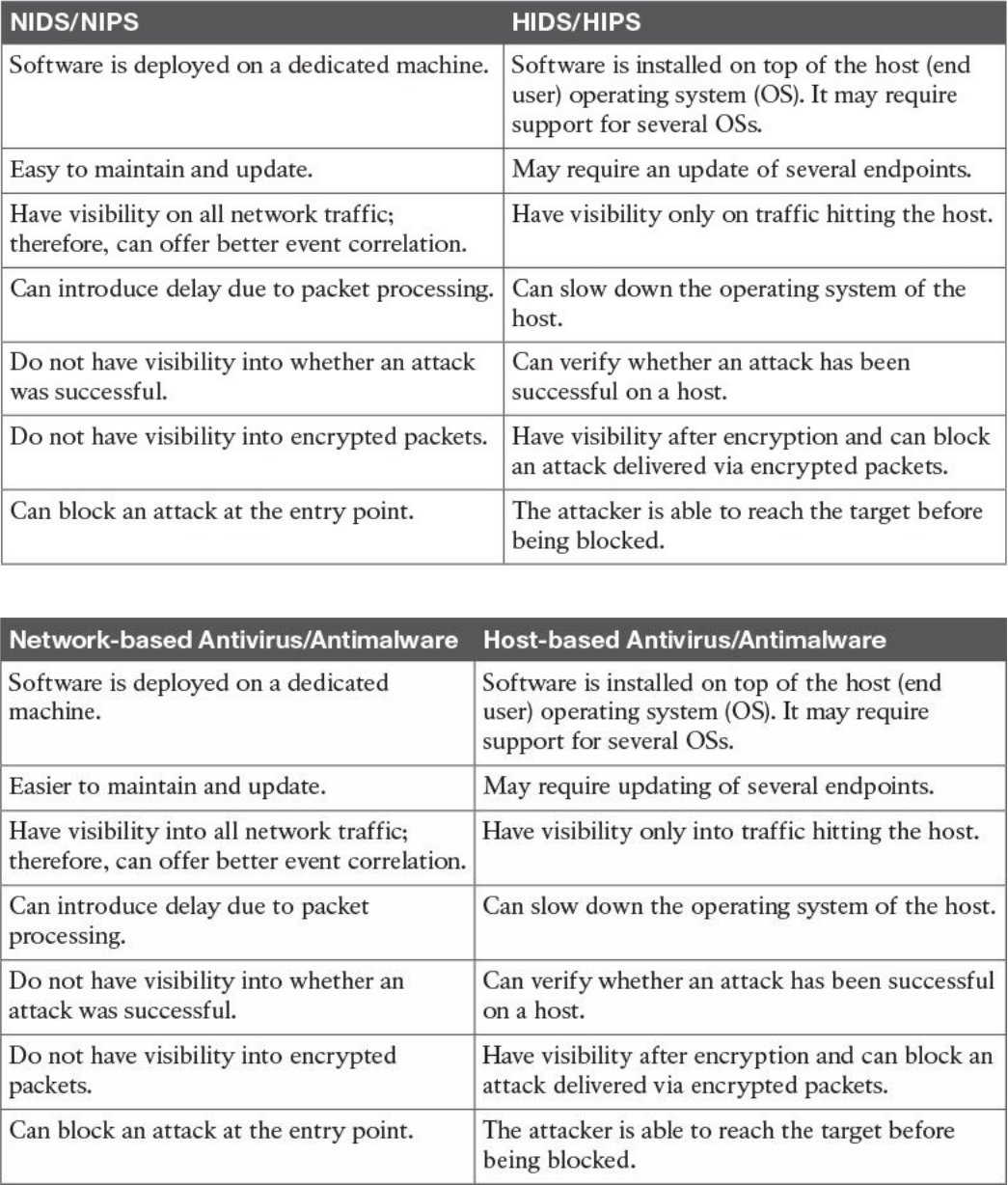

Intrusion Detection Systems and Intrusion Prevention Systems

Pattern Matching and Stateful Pattern-Matching Recognition

Protocol Analysis

Heuristic-Based Analysis

Anomaly-Based Analysis

Global Threat Correlation Capabilities

Next-Generation Intrusion Prevention Systems

www.hellodigi.ir

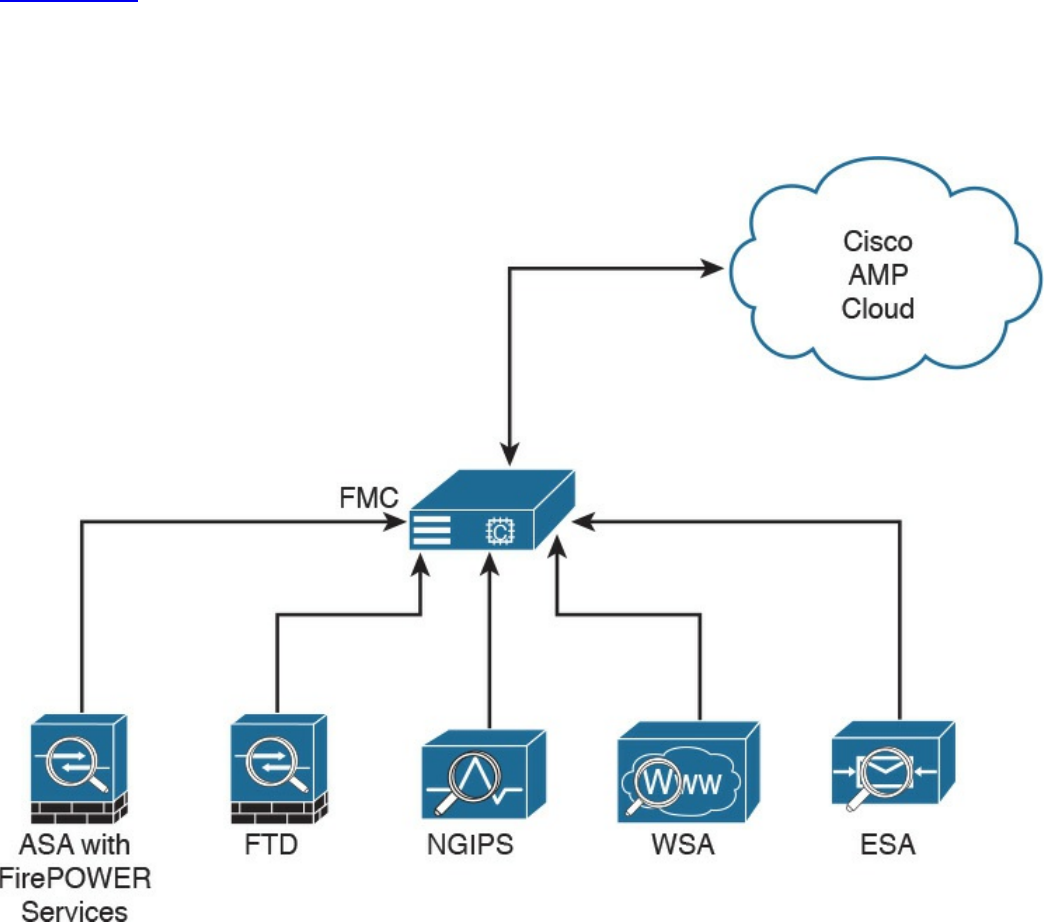

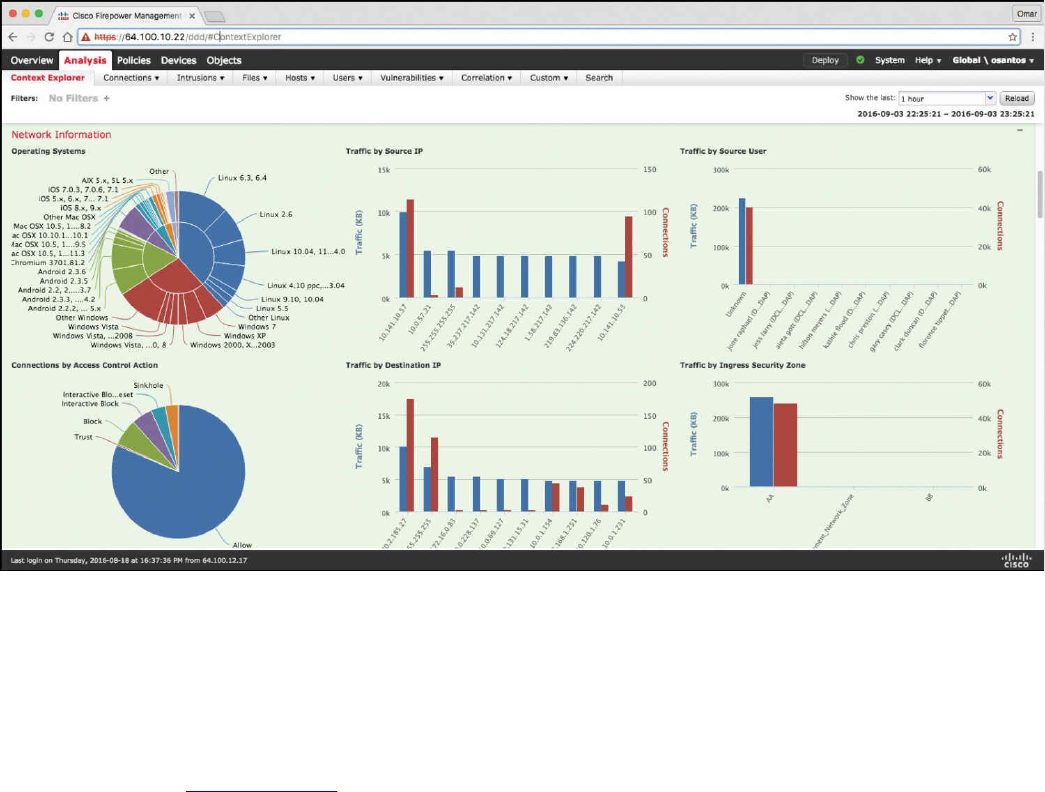

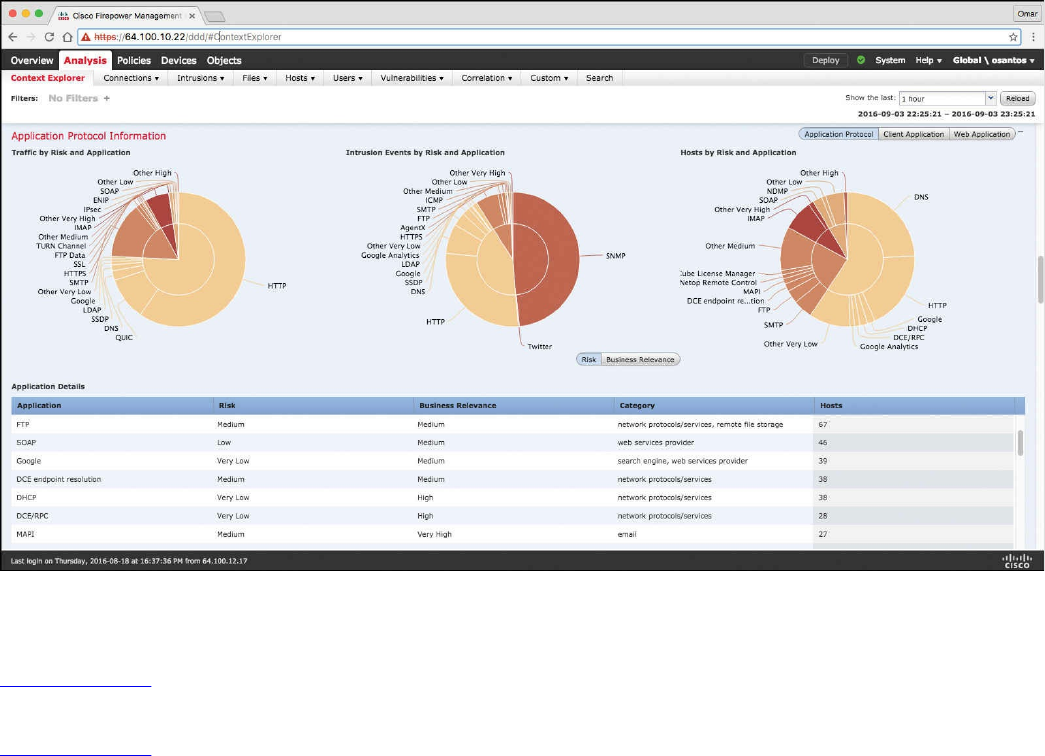

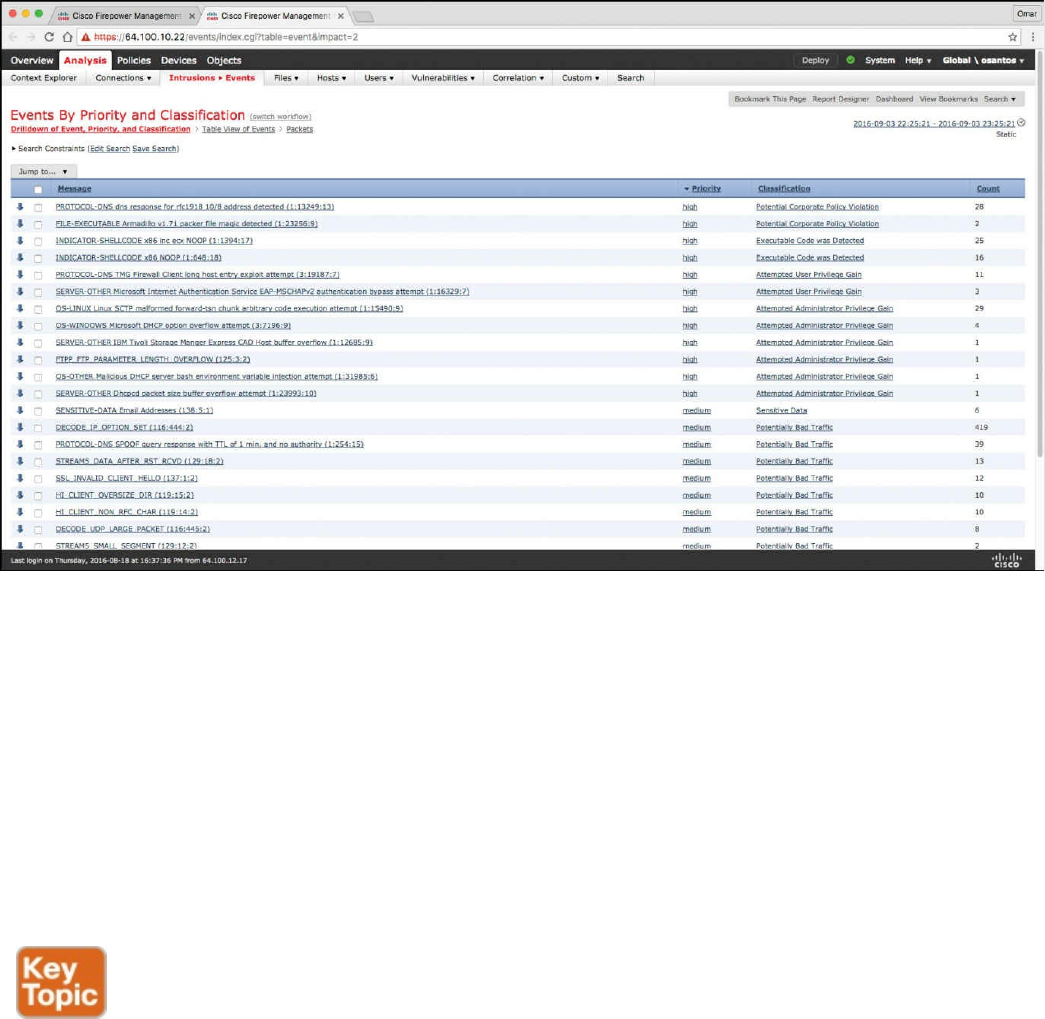

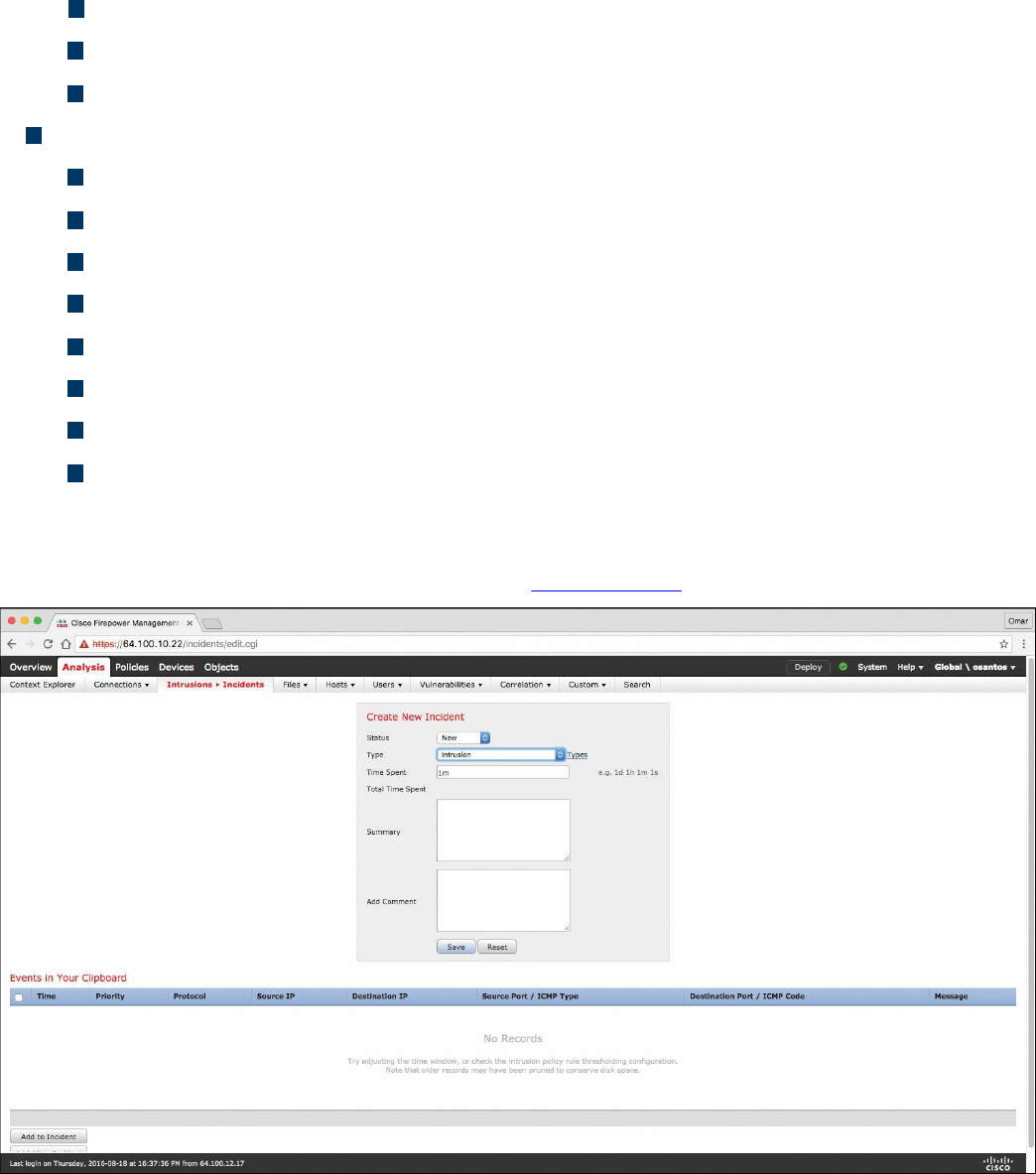

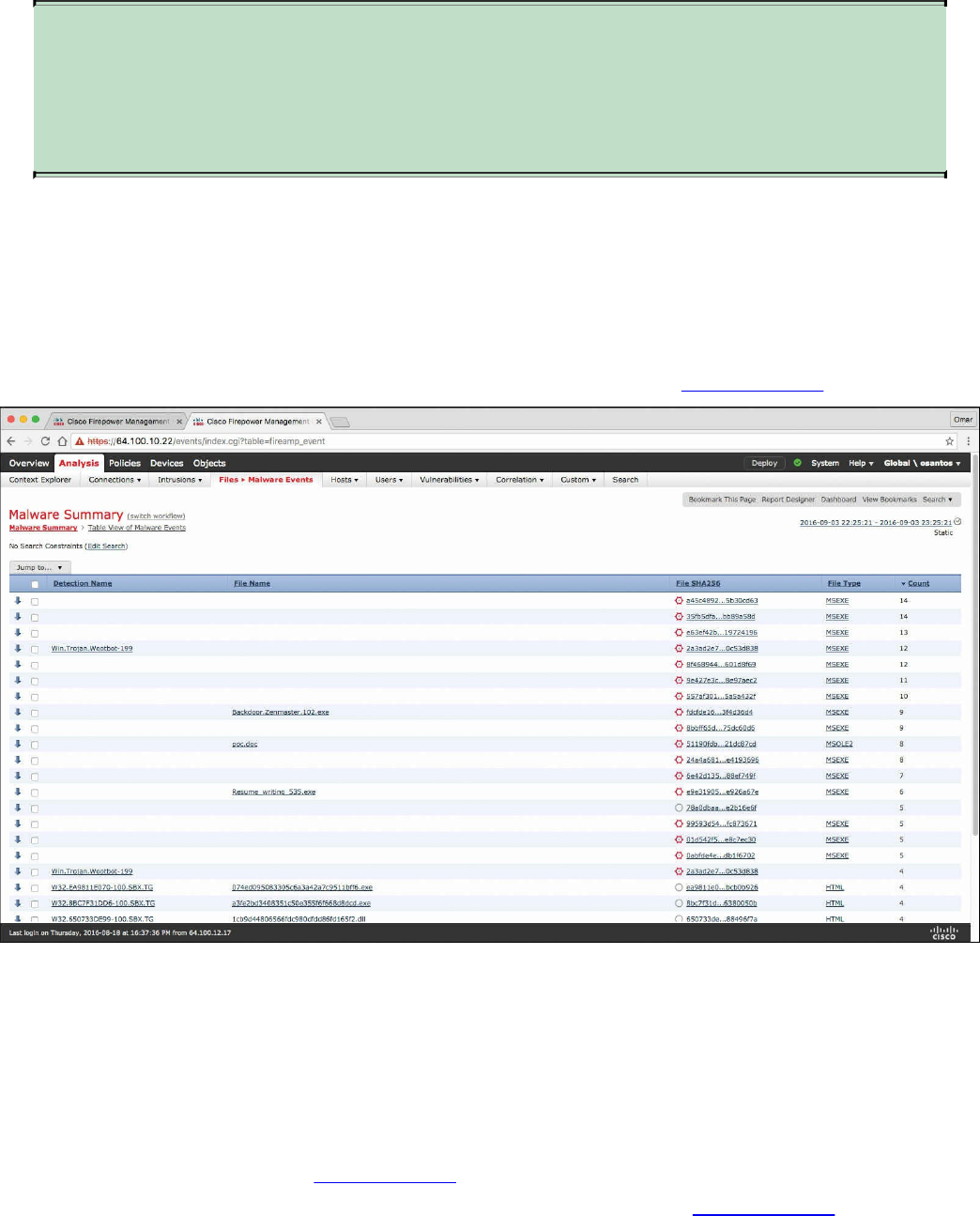

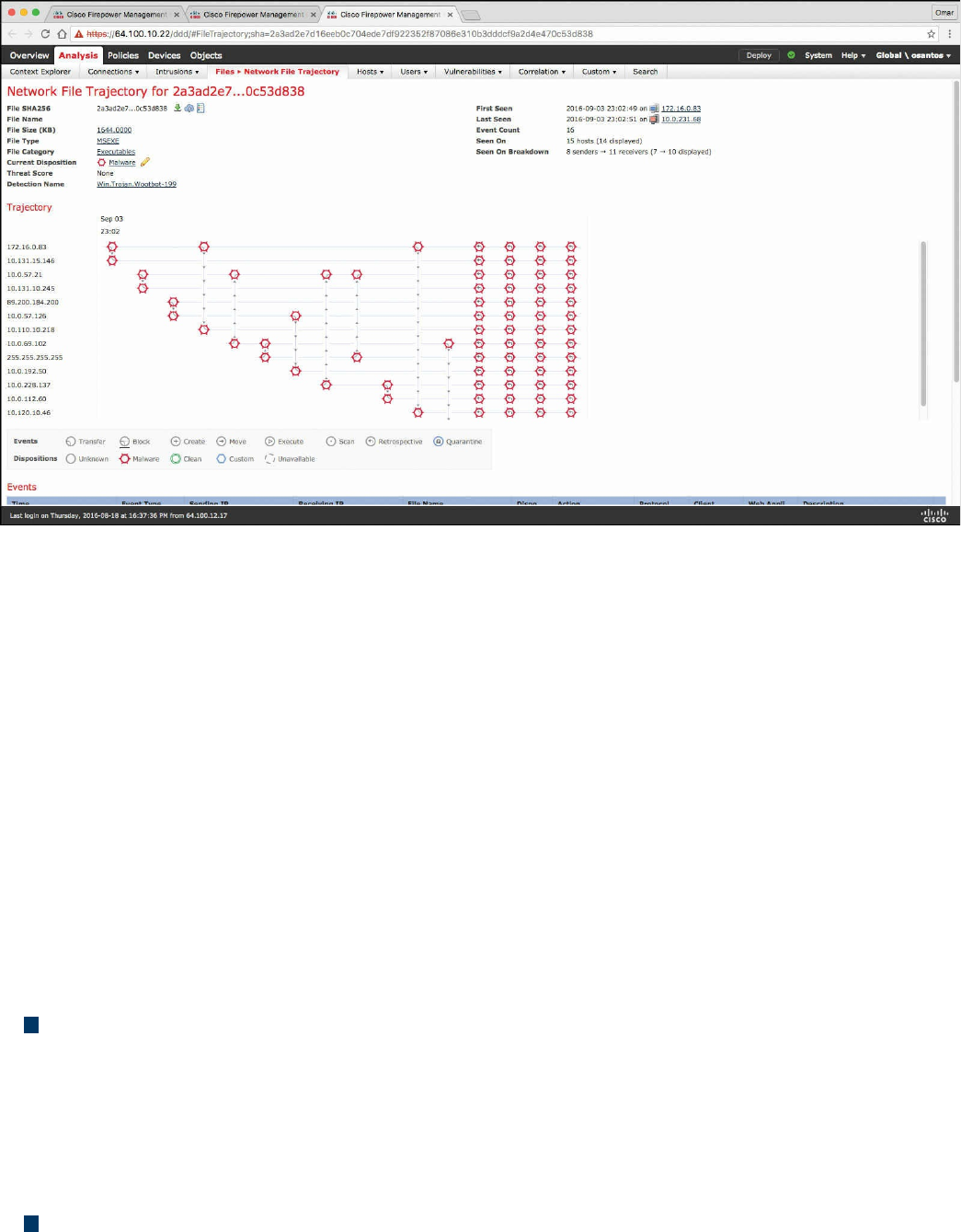

Firepower Management Center

Advanced Malware Protection

AMP for Endpoints

AMP for Networks

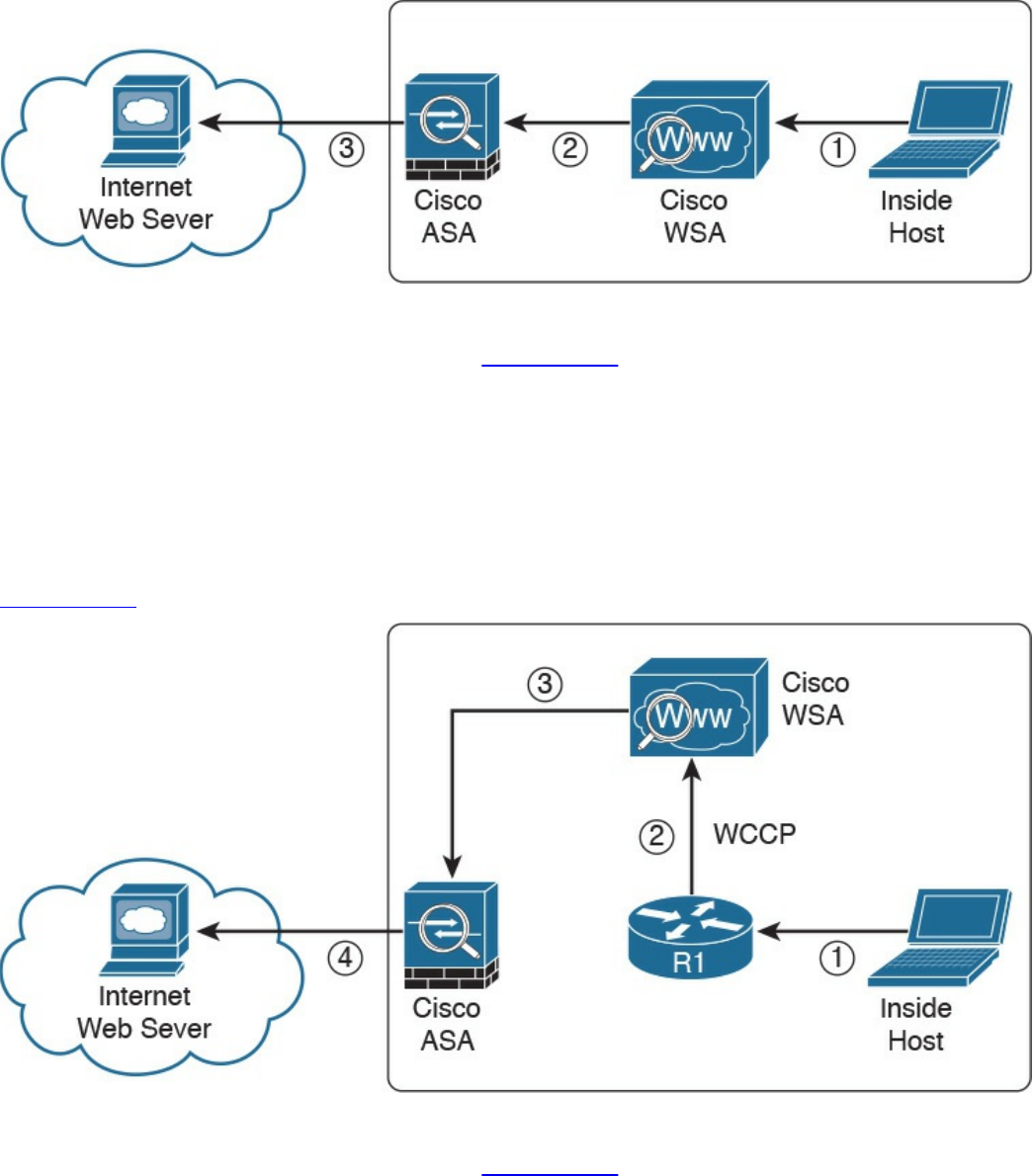

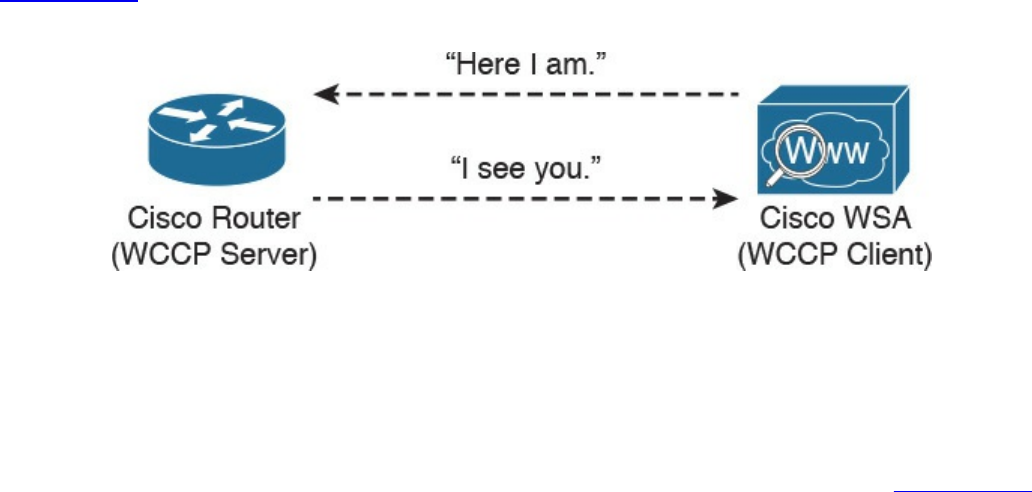

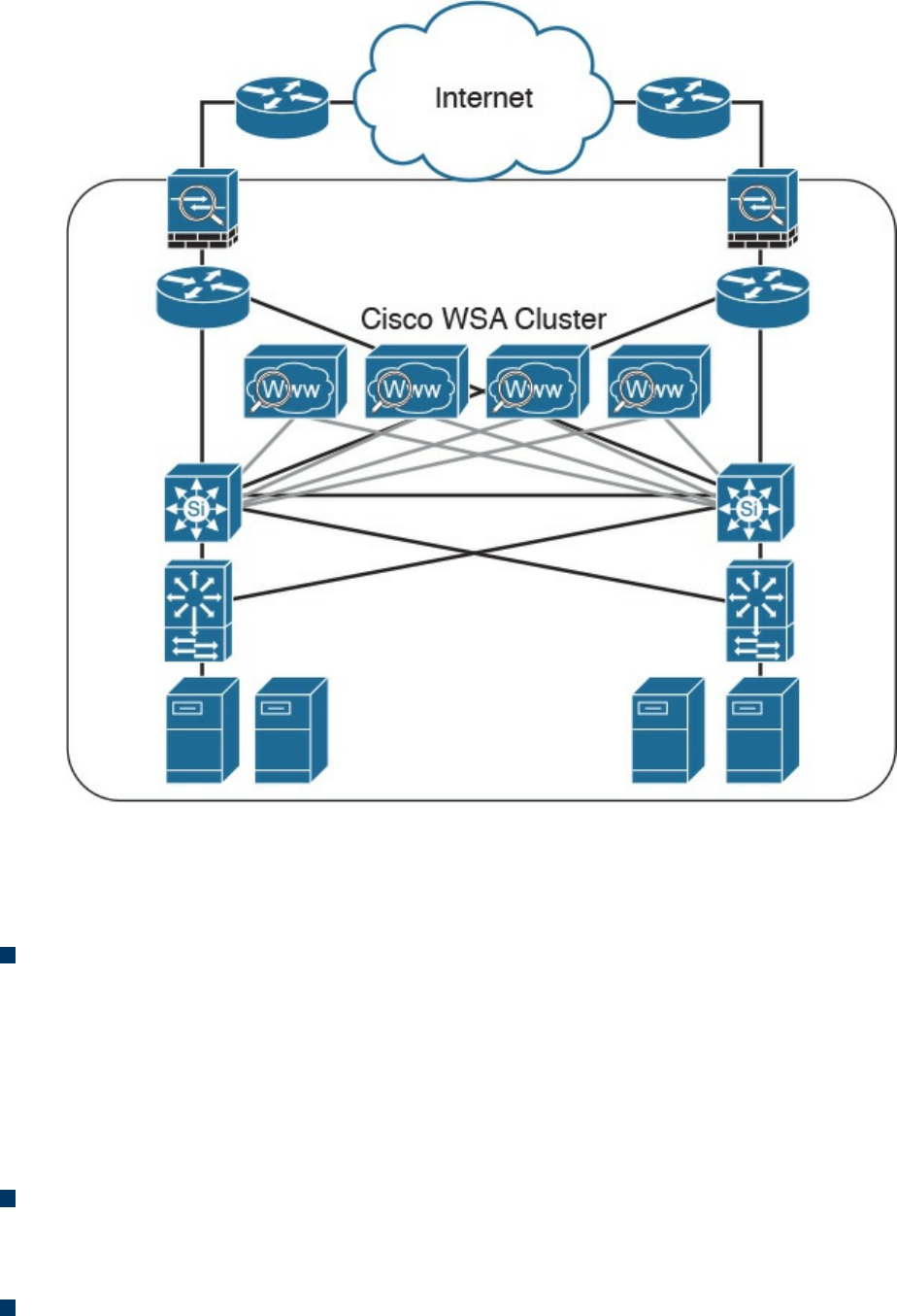

Web Security Appliance

Email Security Appliance

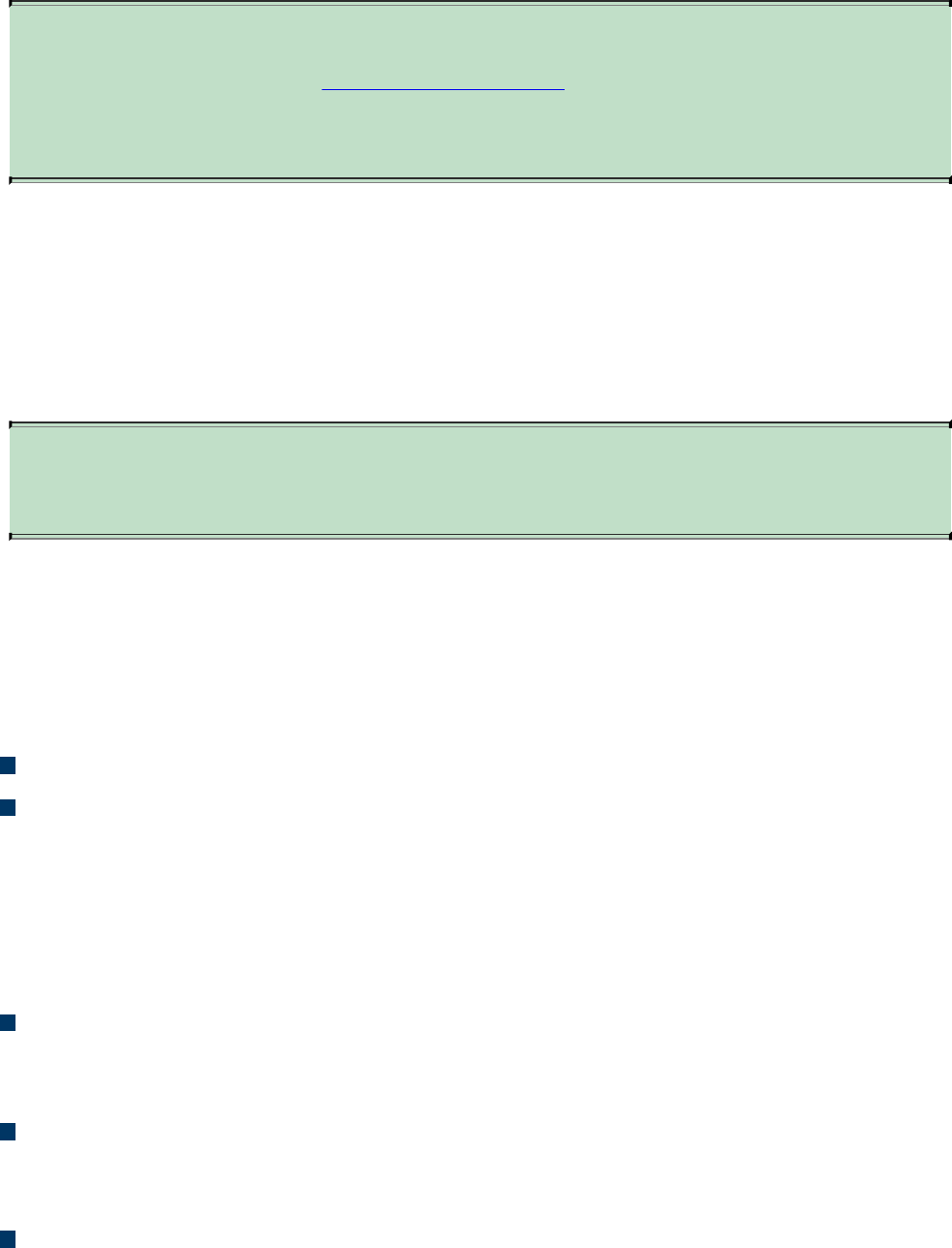

Cisco Security Management Appliance

Cisco Identity Services Engine

Security Cloud-based Solutions

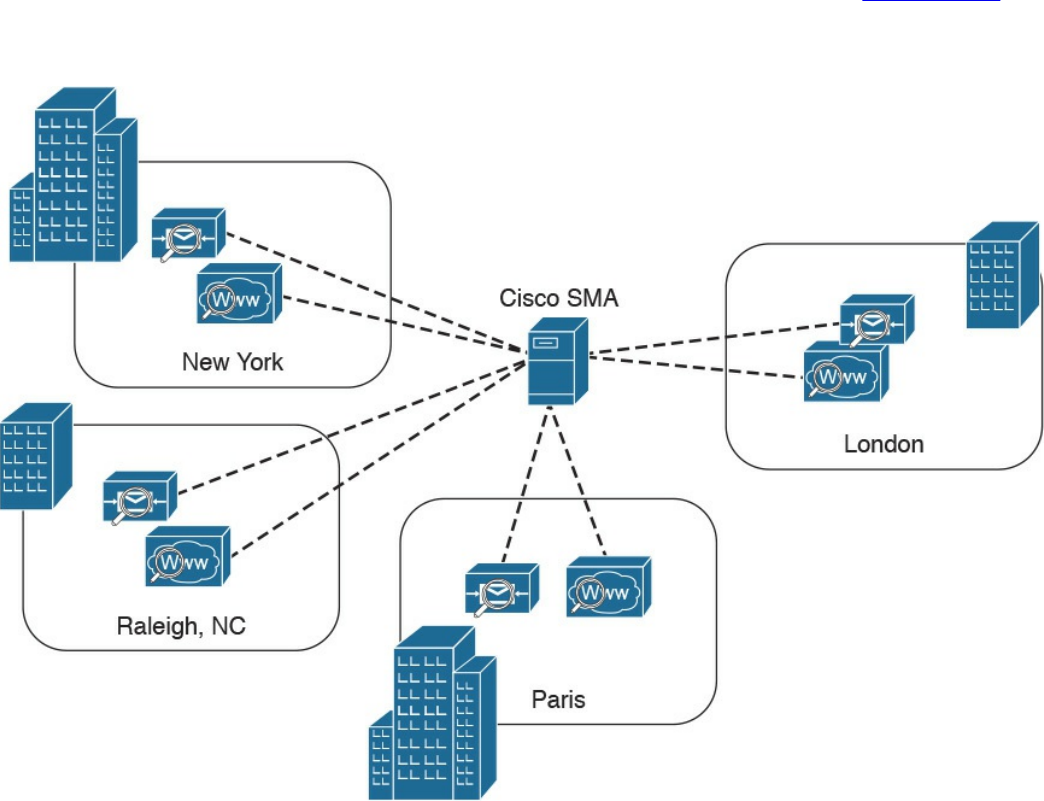

Cisco Cloud Web Security

Cisco Cloud Email Security

Cisco AMP Threat Grid

Cisco Threat Awareness Service

OpenDNS

CloudLock

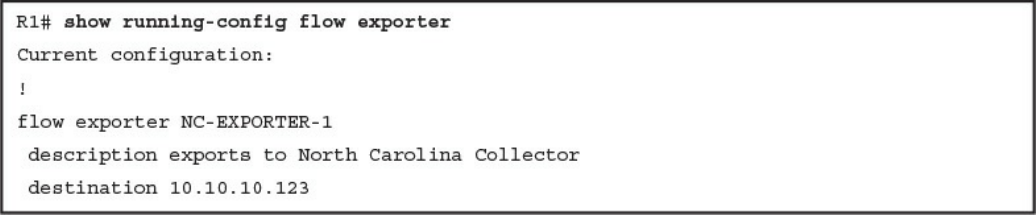

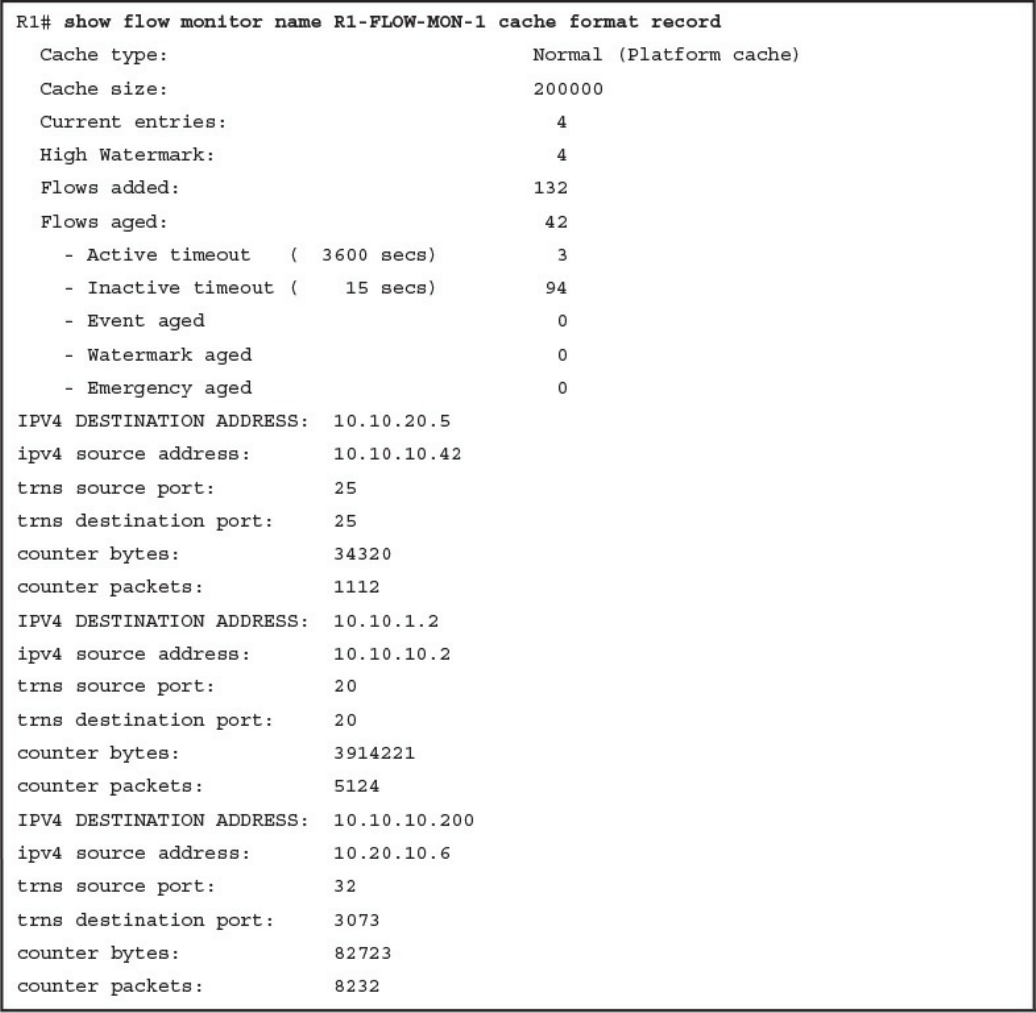

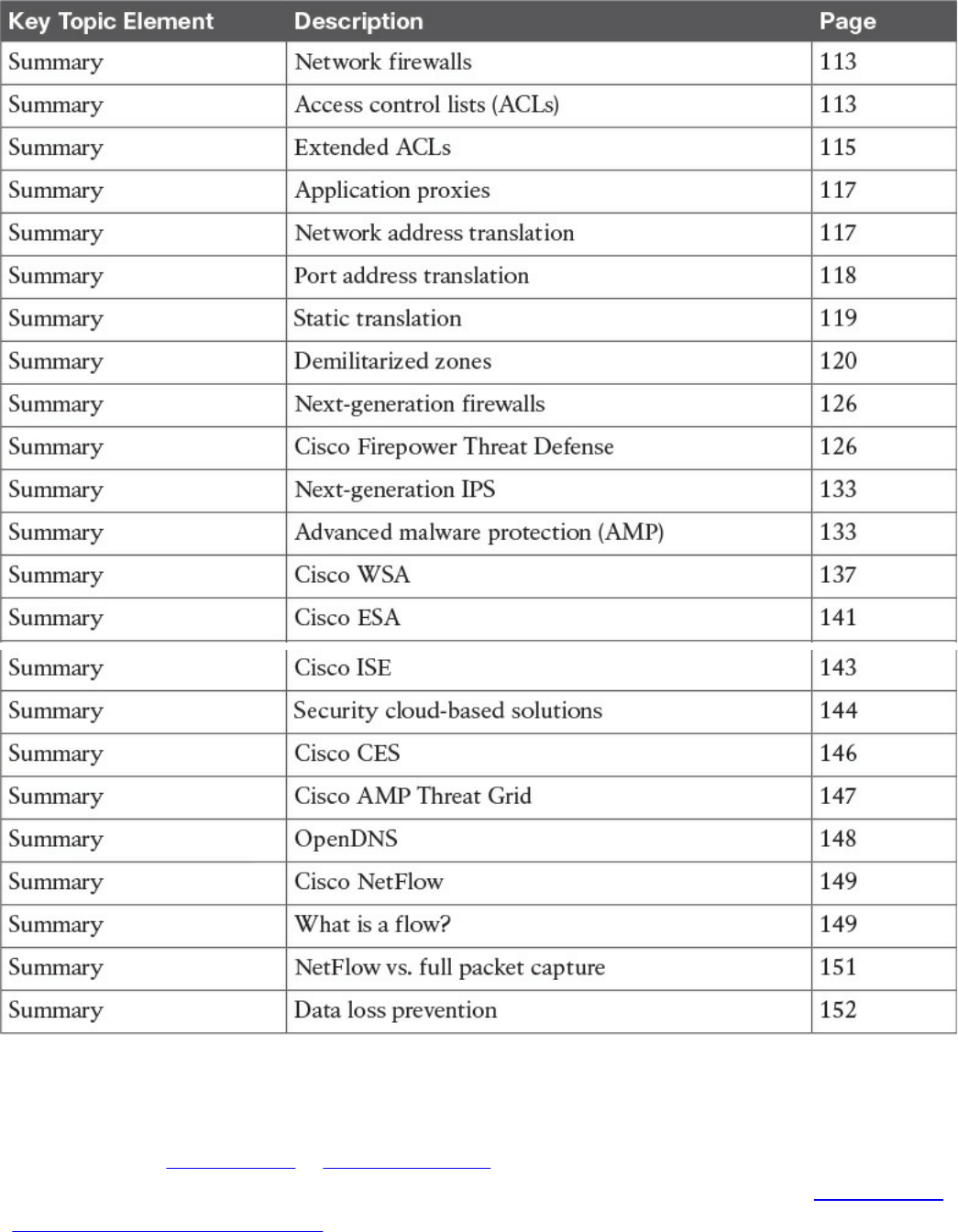

Cisco NetFlow

What Is the Flow in NetFlow?

NetFlow vs. Full Packet Capture

The NetFlow Cache

Data Loss Prevention

Exam Preparation Tasks

Review All Key Topics

Complete Tables and Lists from Memory

Define Key Terms

Q&A

Part II Security Concepts

Chapter 3 Security Principles

“Do I Know This Already?” Quiz

Foundation Topics

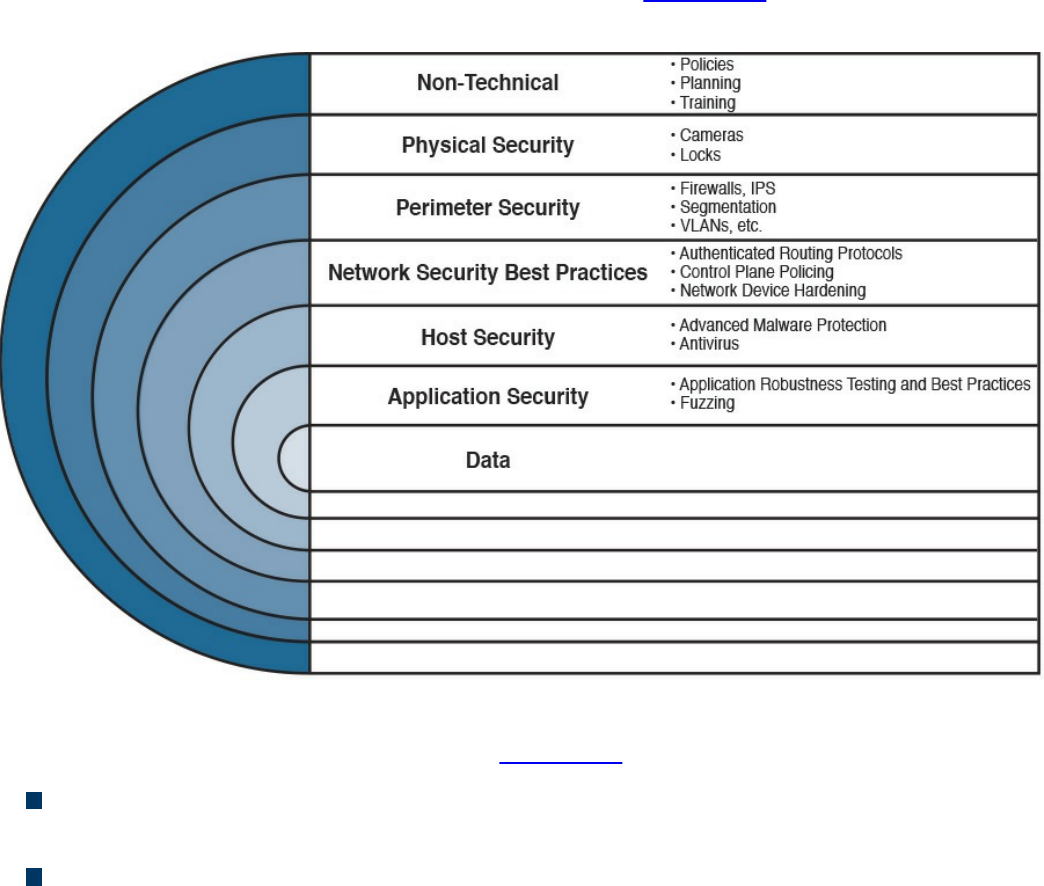

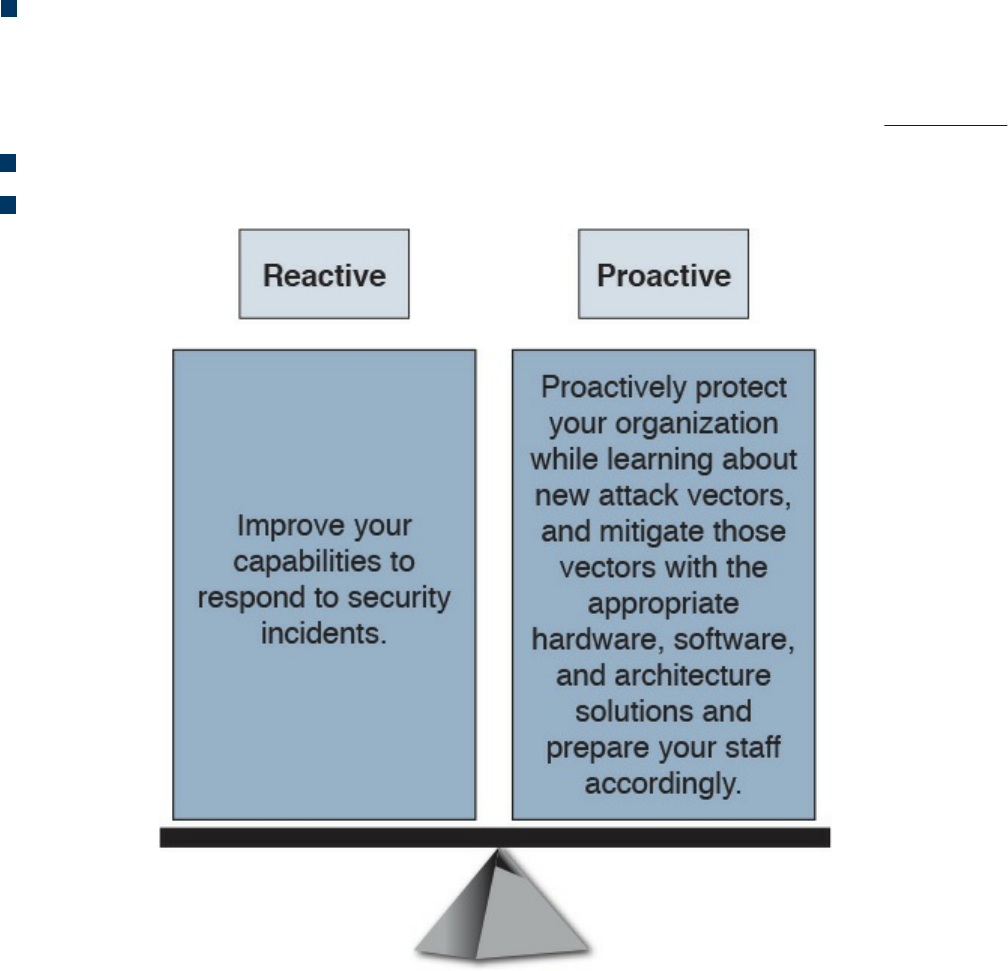

The Principles of the Defense-in-Depth Strategy

What Are Threats, Vulnerabilities, and Exploits?

Vulnerabilities

Threats

Threat Actors

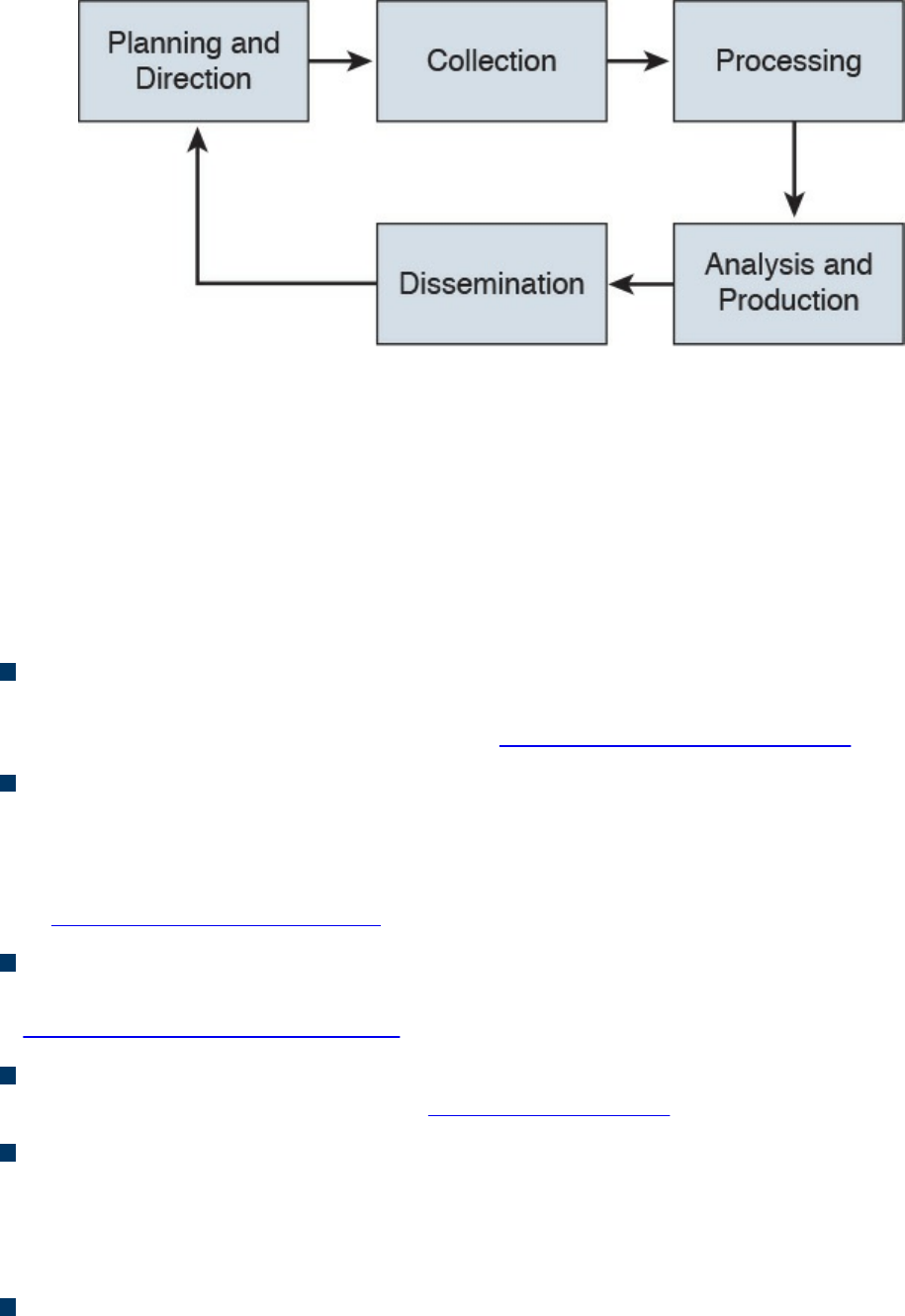

Threat Intelligence

Exploits

Confidentiality, Integrity, and Availability: The CIA Triad

Confidentiality

Integrity

Availability

Risk and Risk Analysis

Personally Identifiable Information and Protected Health Information

PII

PHI

Principle of Least Privilege and Separation of Duties

Principle of Least Privilege

Separation of Duties

Security Operation Centers

Runbook Automation

Forensics

Evidentiary Chain of Custody

Reverse Engineering

Exam Preparation Tasks

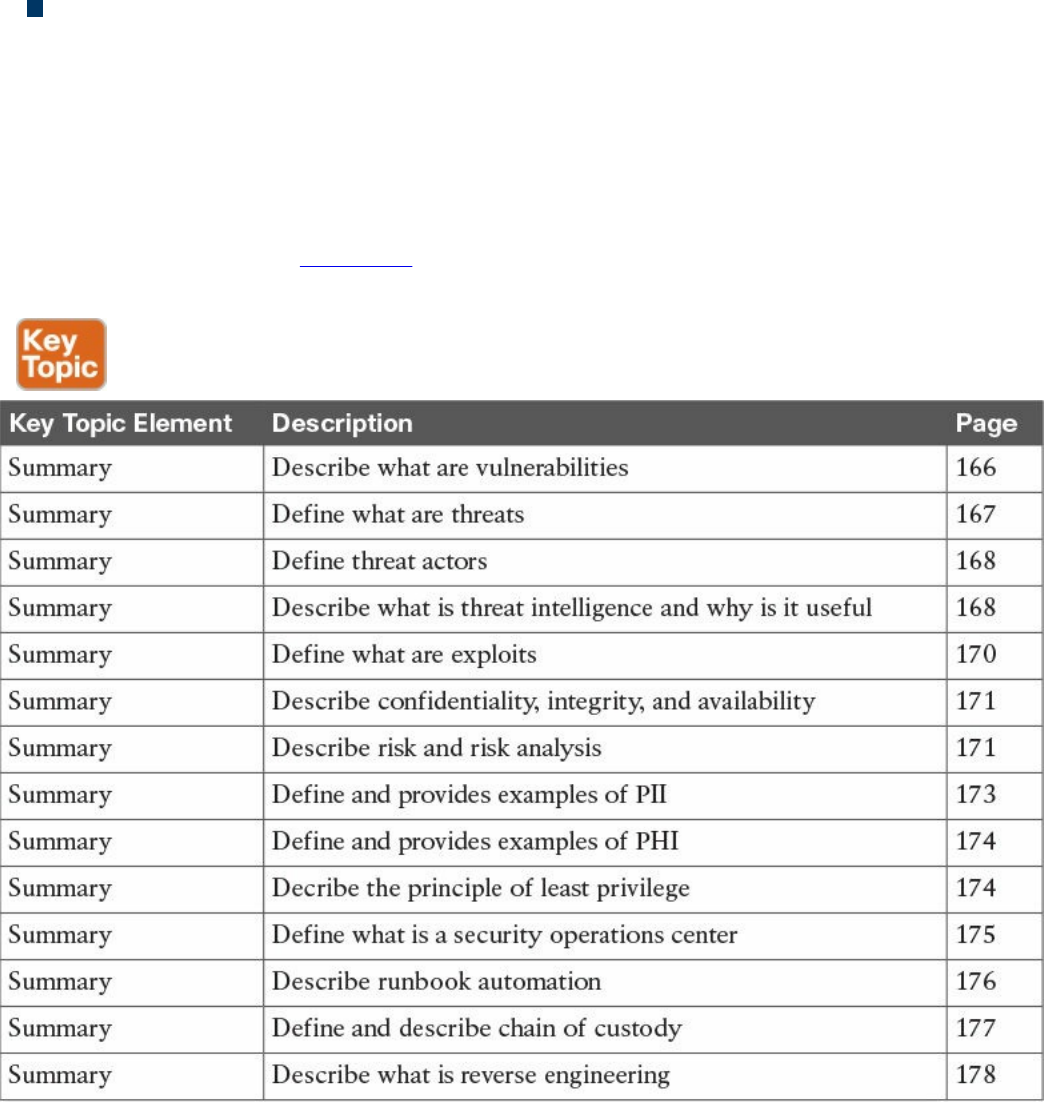

Review All Key Topics

Define Key Terms

Q&A

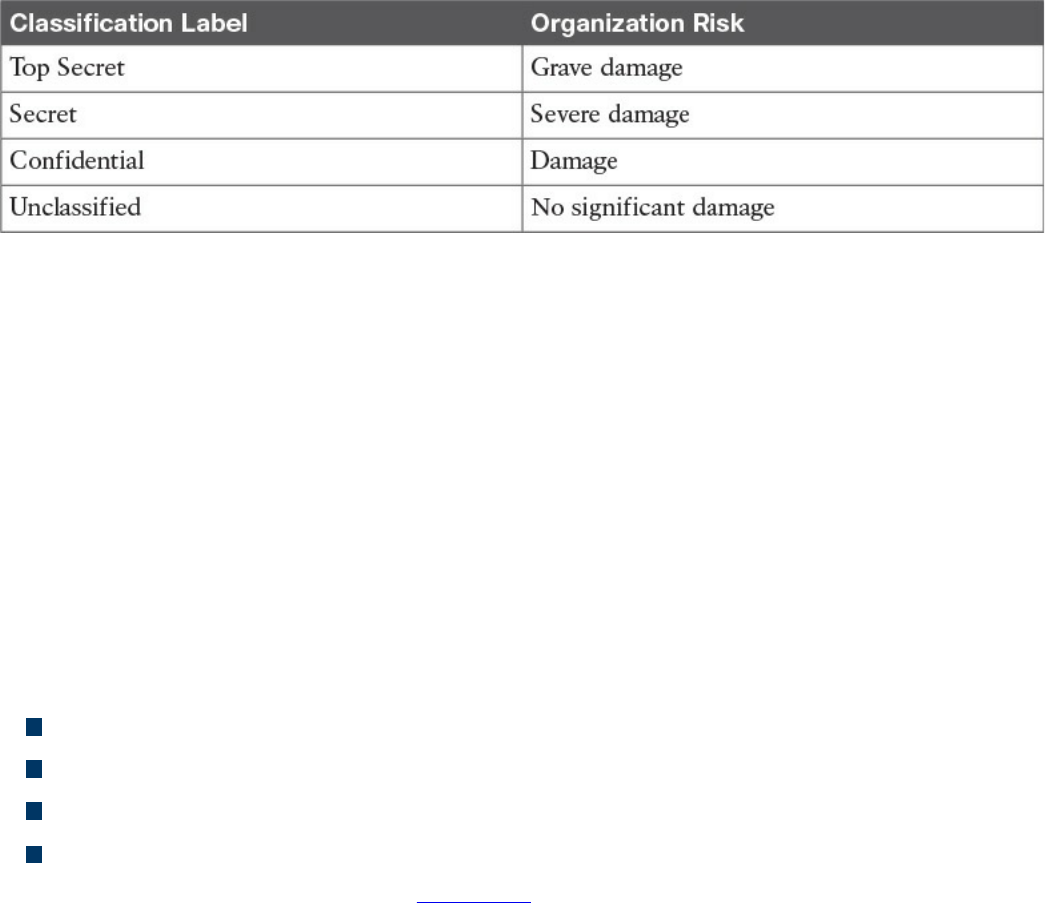

Chapter 4 Introduction to Access Controls

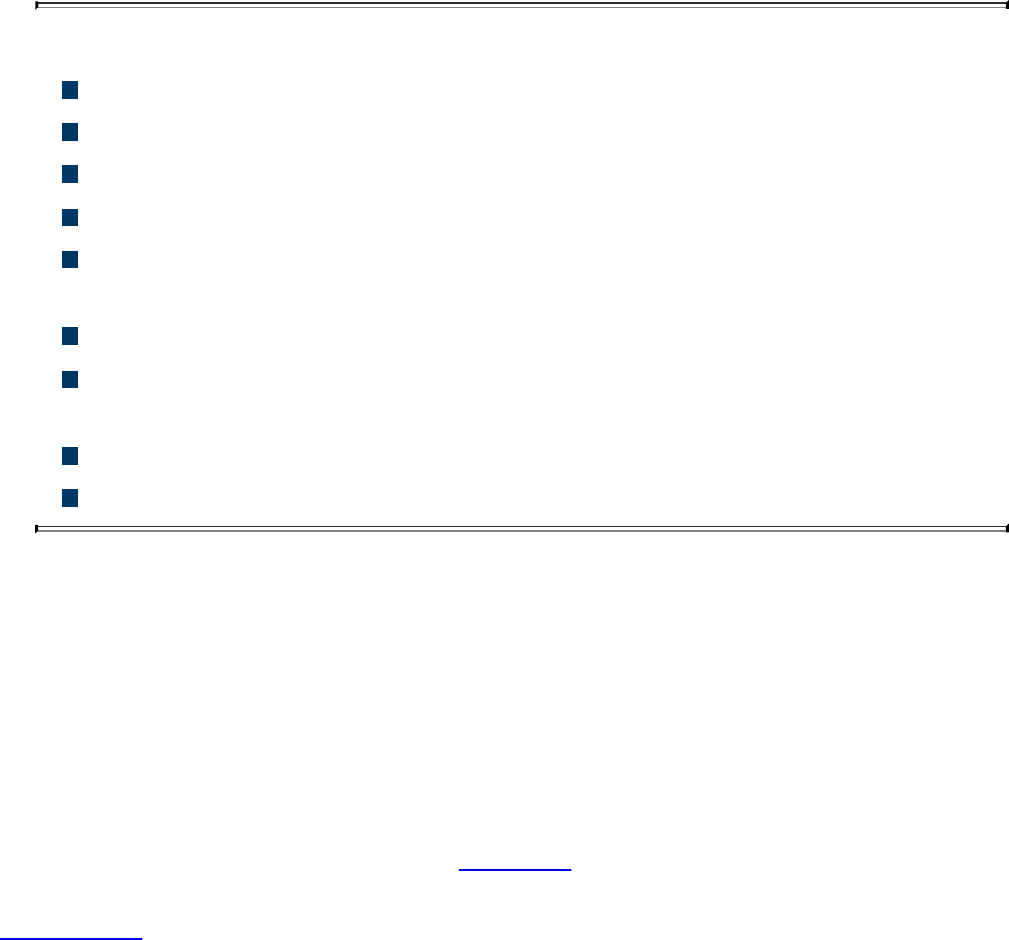

“Do I Know This Already?” Quiz

Foundation Topics

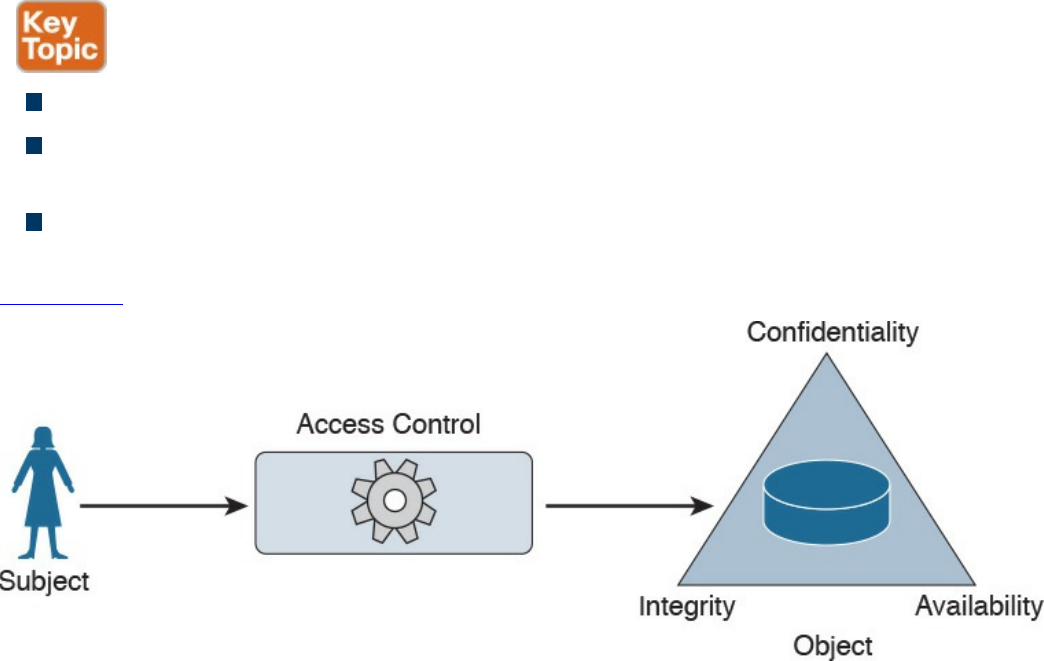

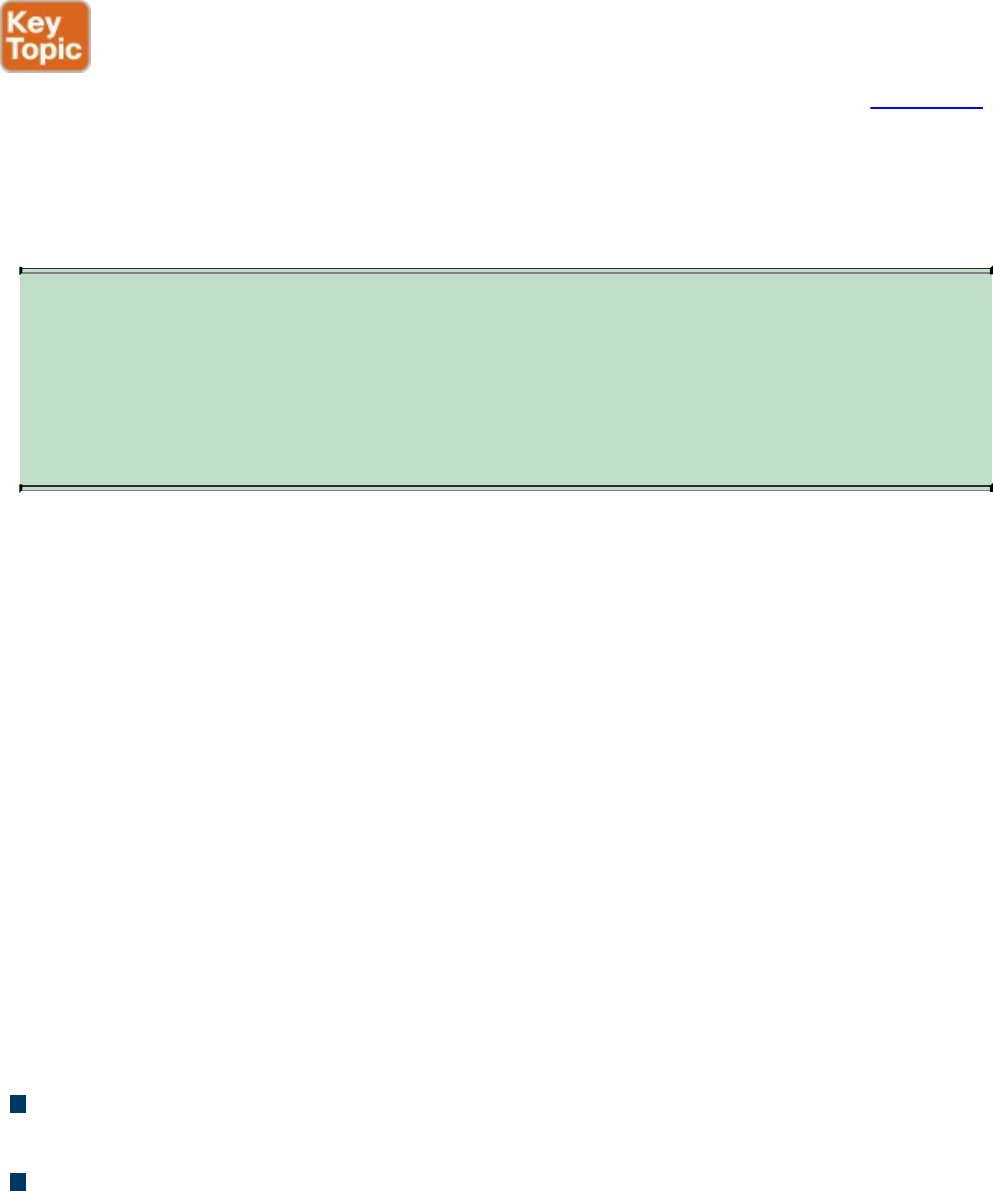

Information Security Principles

Subject and Object Definition

Access Control Fundamentals

Identification

Authentication

www.hellodigi.ir

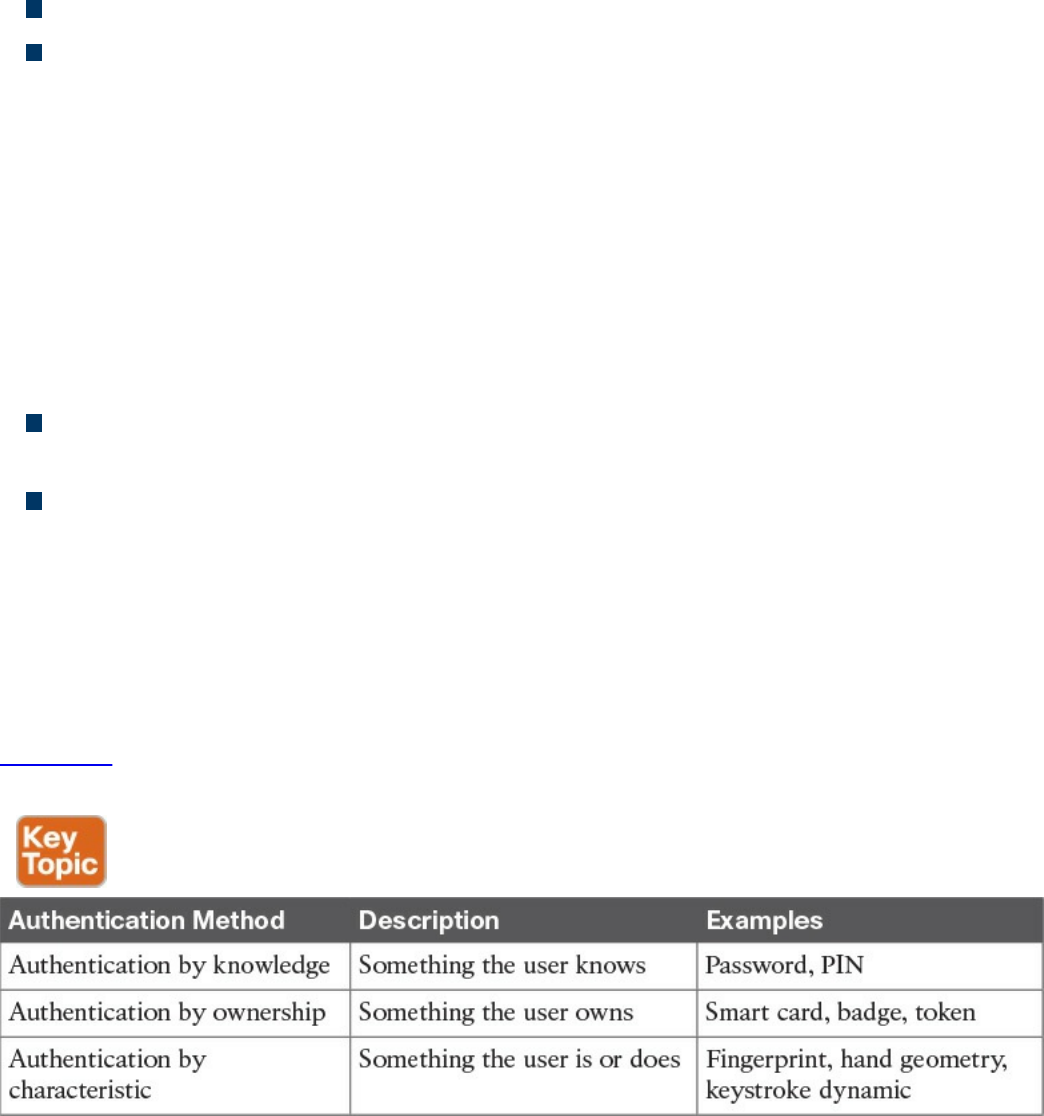

Authentication by Knowledge

Authentication by Ownership

Authentication by Characteristic

Multifactor Authentication

Authorization

Accounting

Access Control Fundamentals: Summary

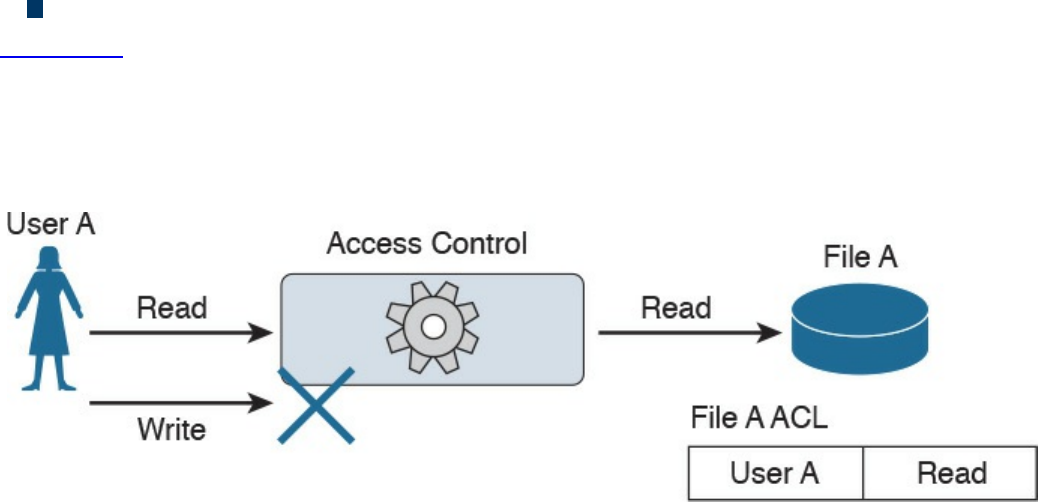

Access Control Process

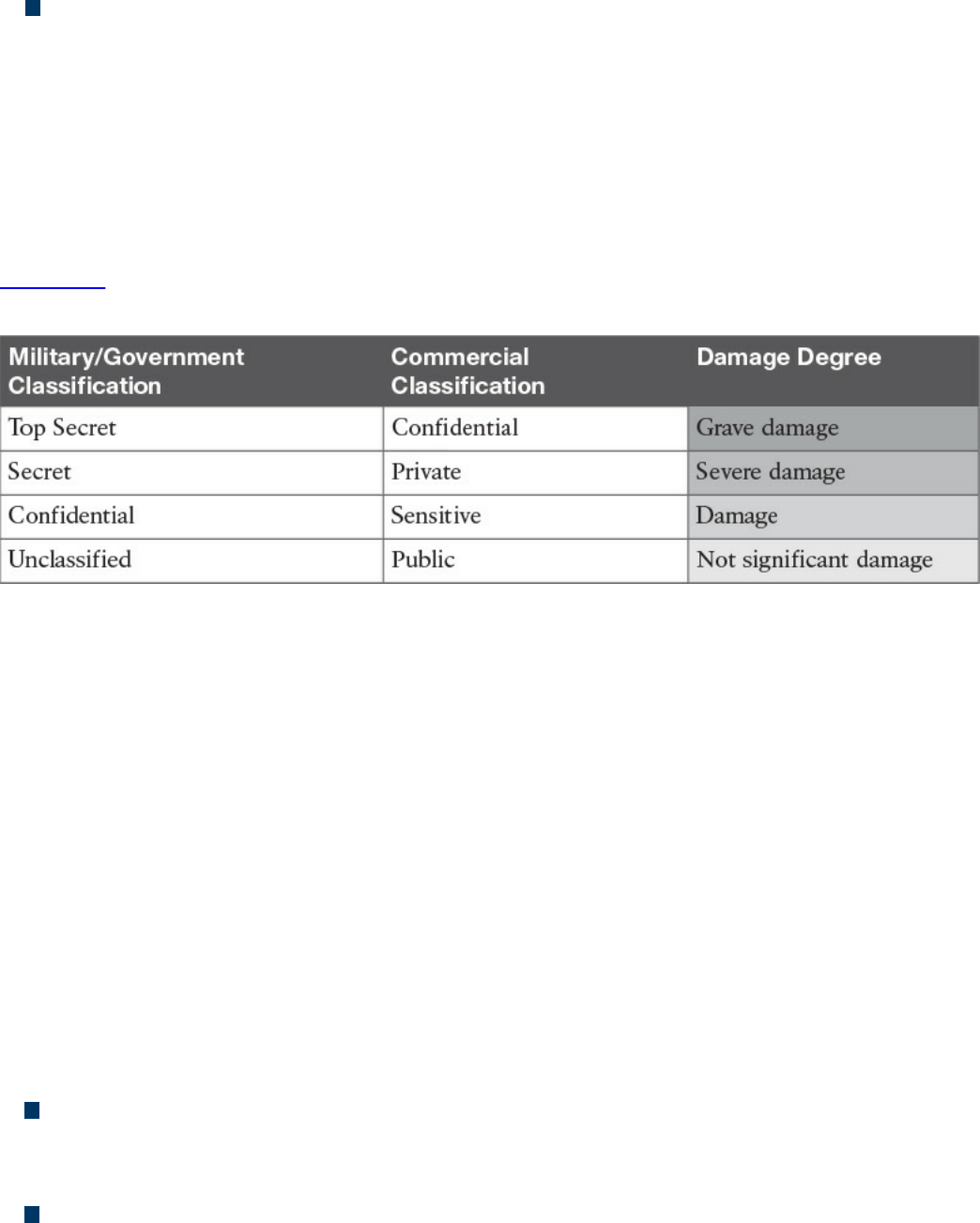

Asset Classification

Asset Marking

Access Control Policy

Data Disposal

Information Security Roles and Responsibilities

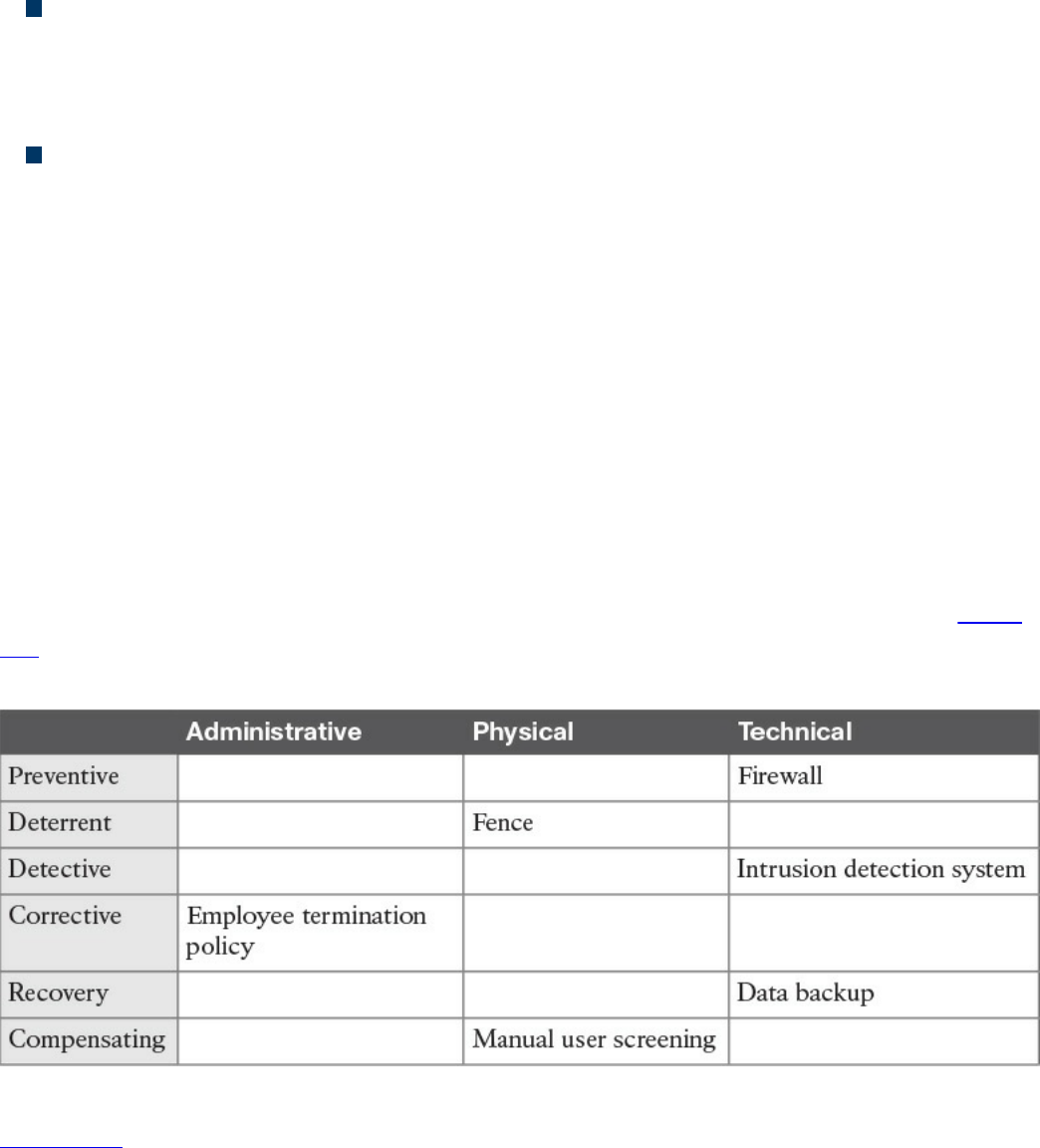

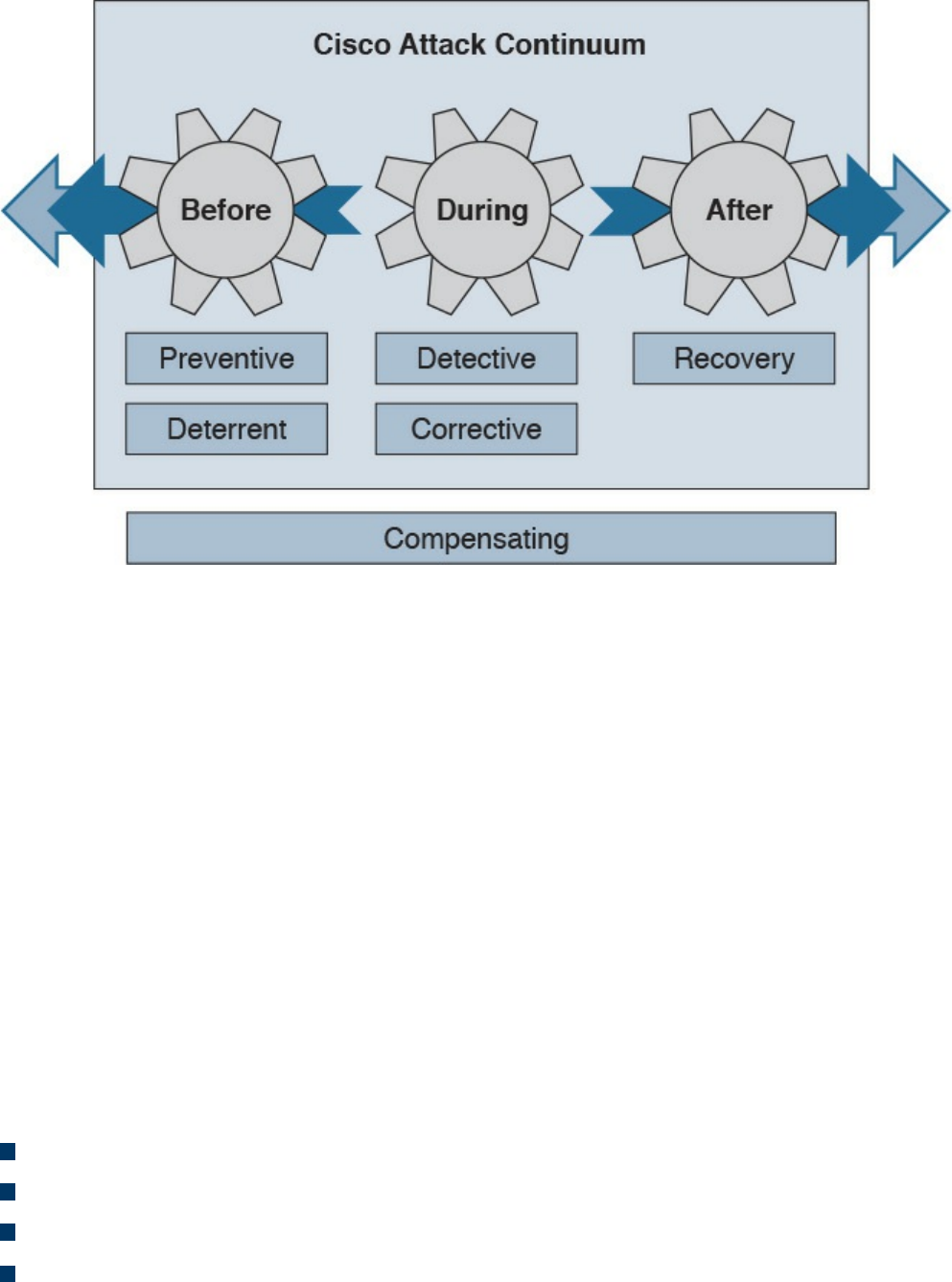

Access Control Types

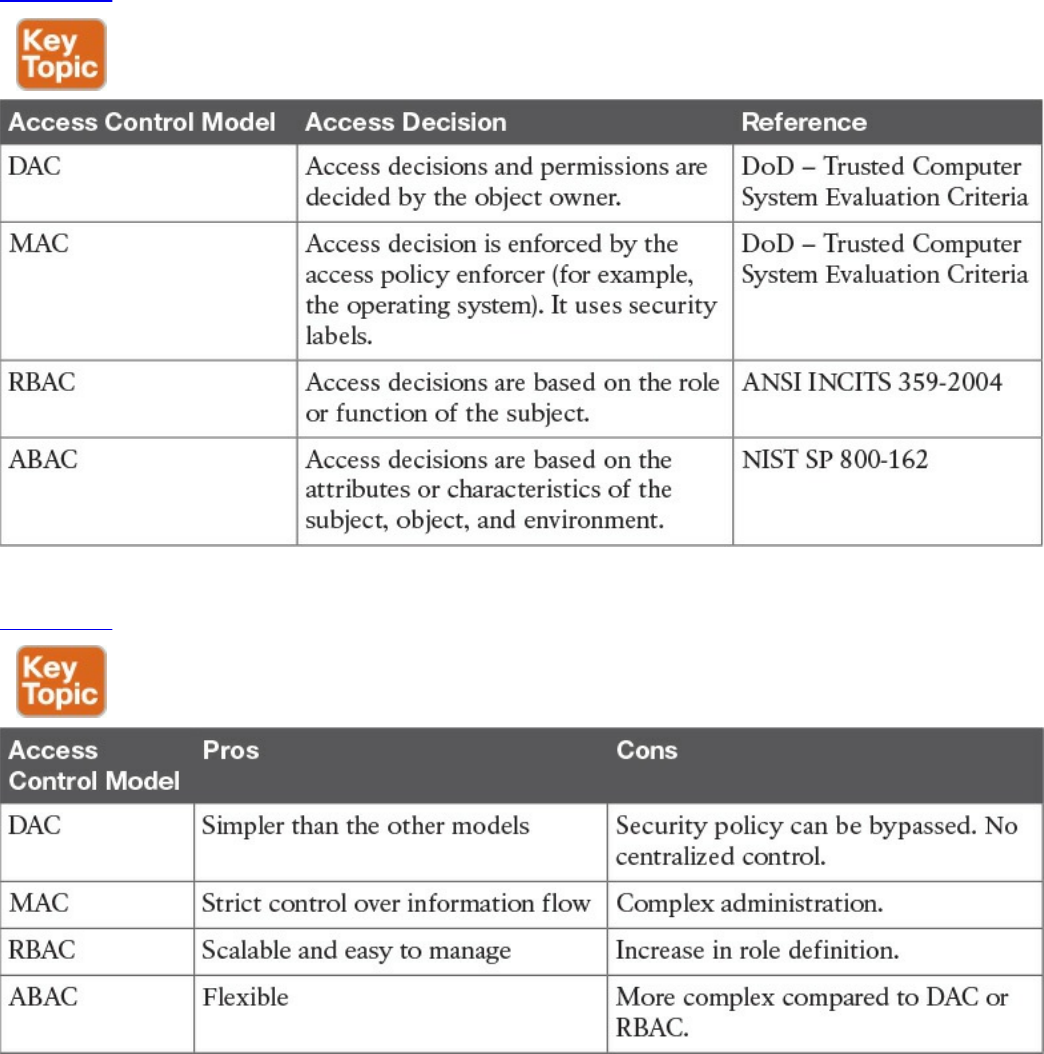

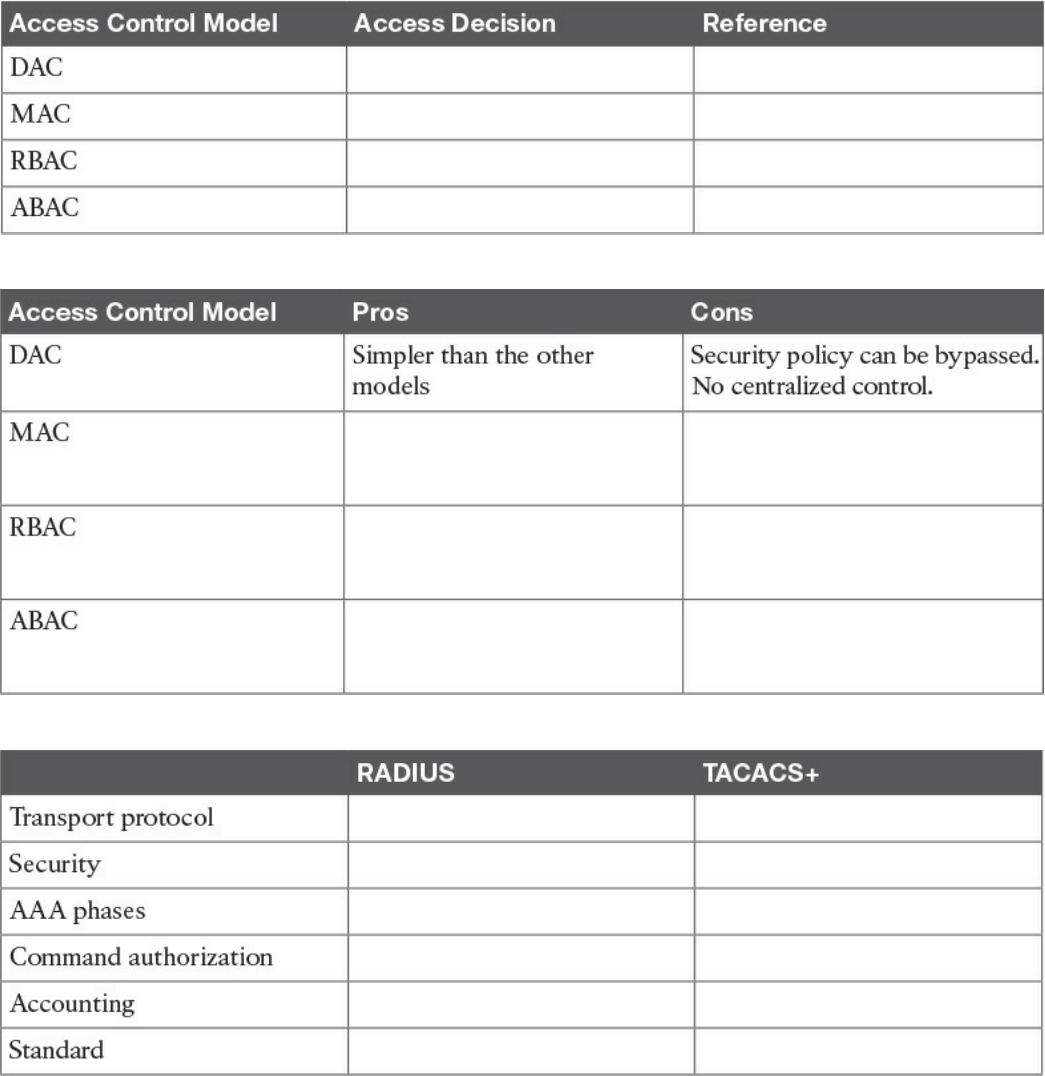

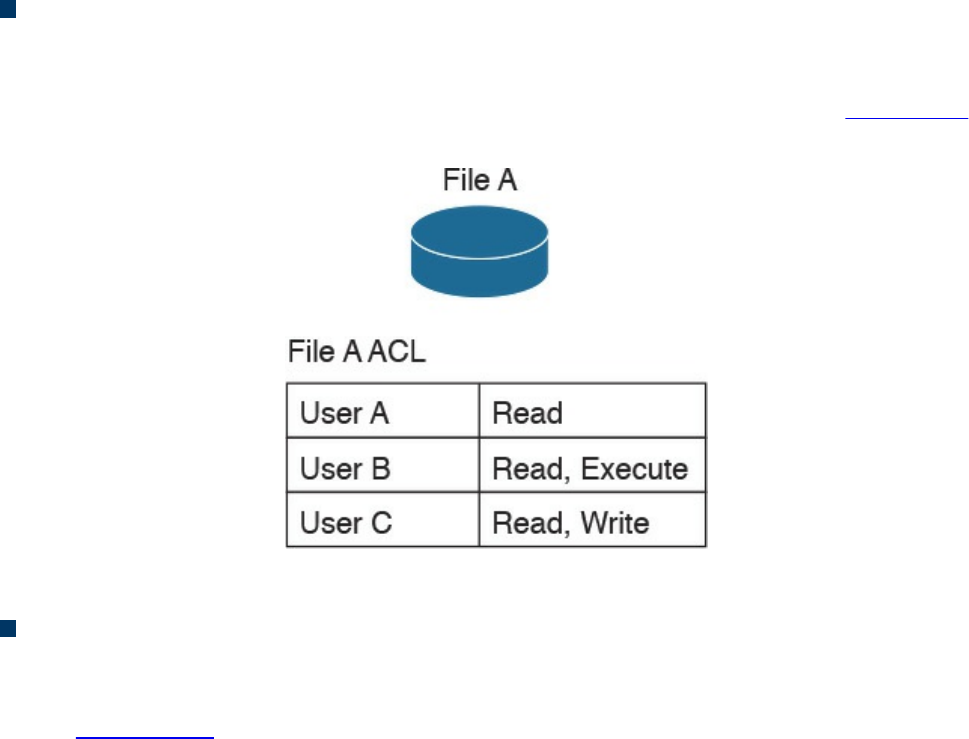

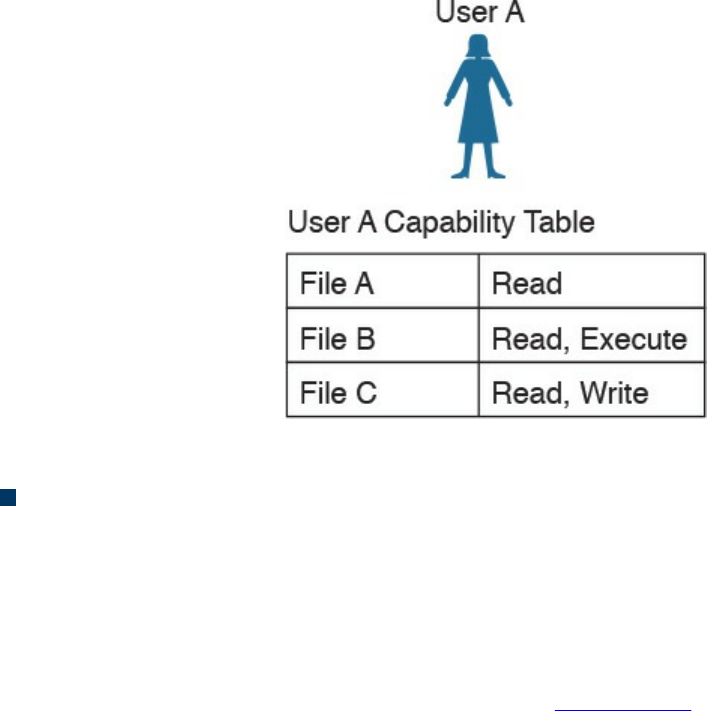

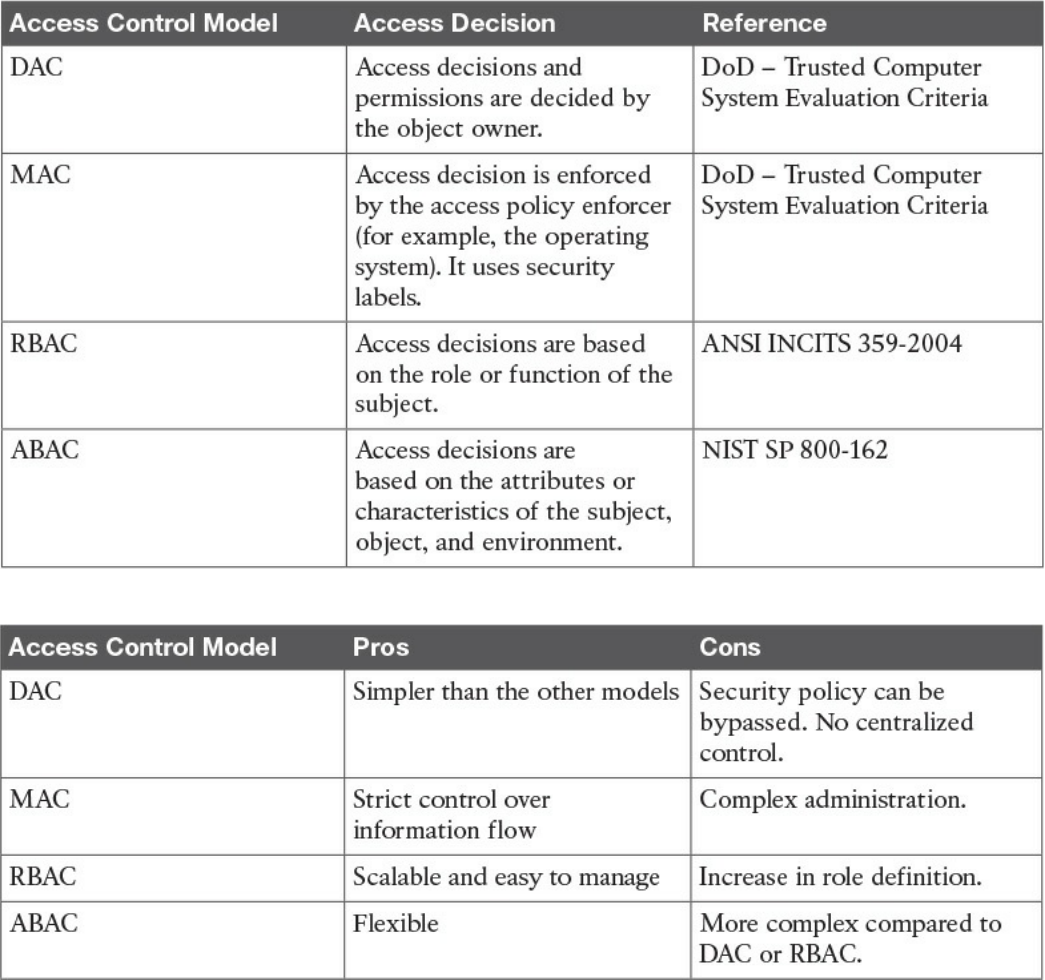

Access Control Models

Discretionary Access Control

Mandatory Access Control

Role-Based Access Control

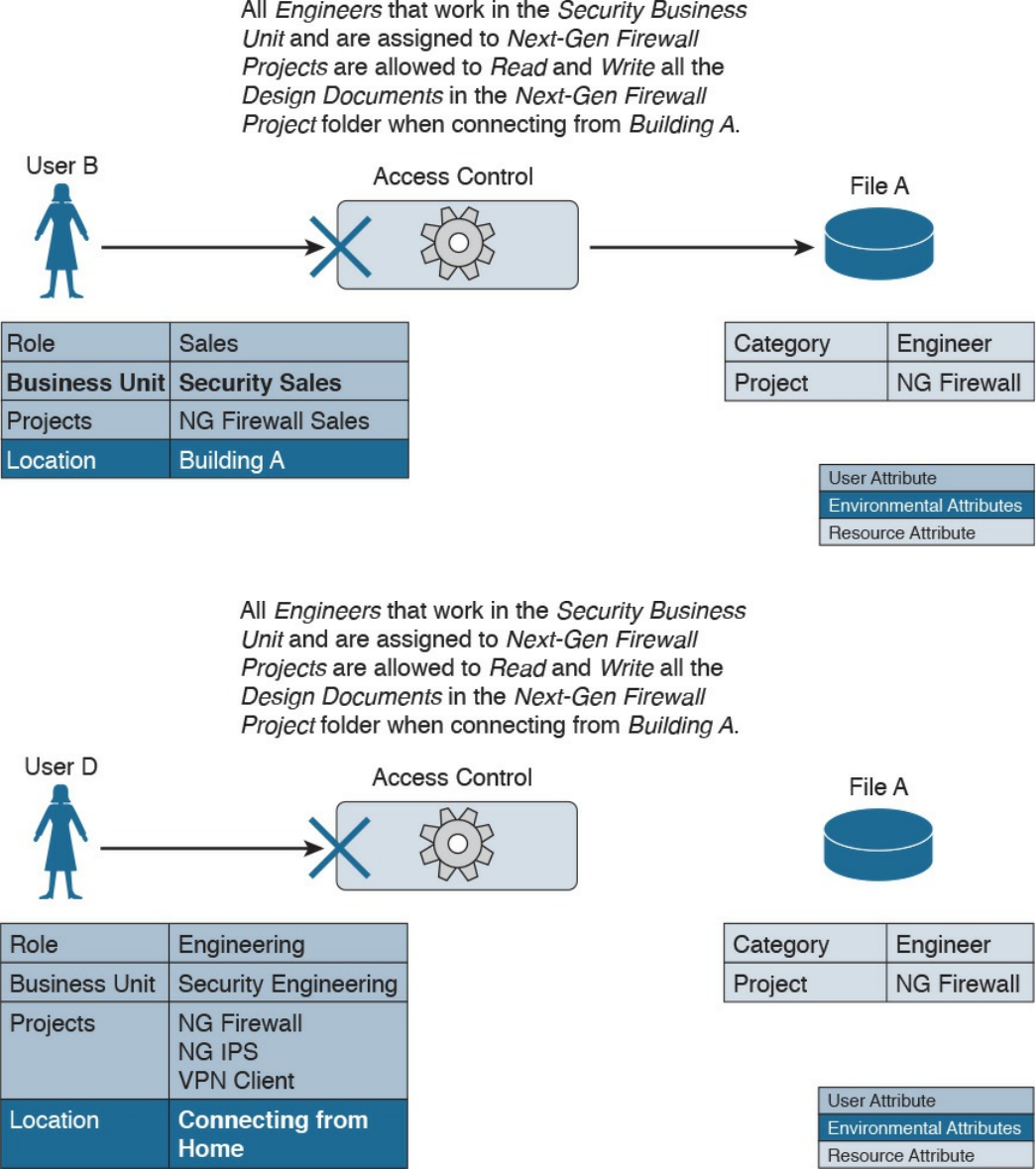

Attribute-Based Access Control

Access Control Mechanisms

Identity and Access Control Implementation

Authentication, Authorization, and Accounting Protocols

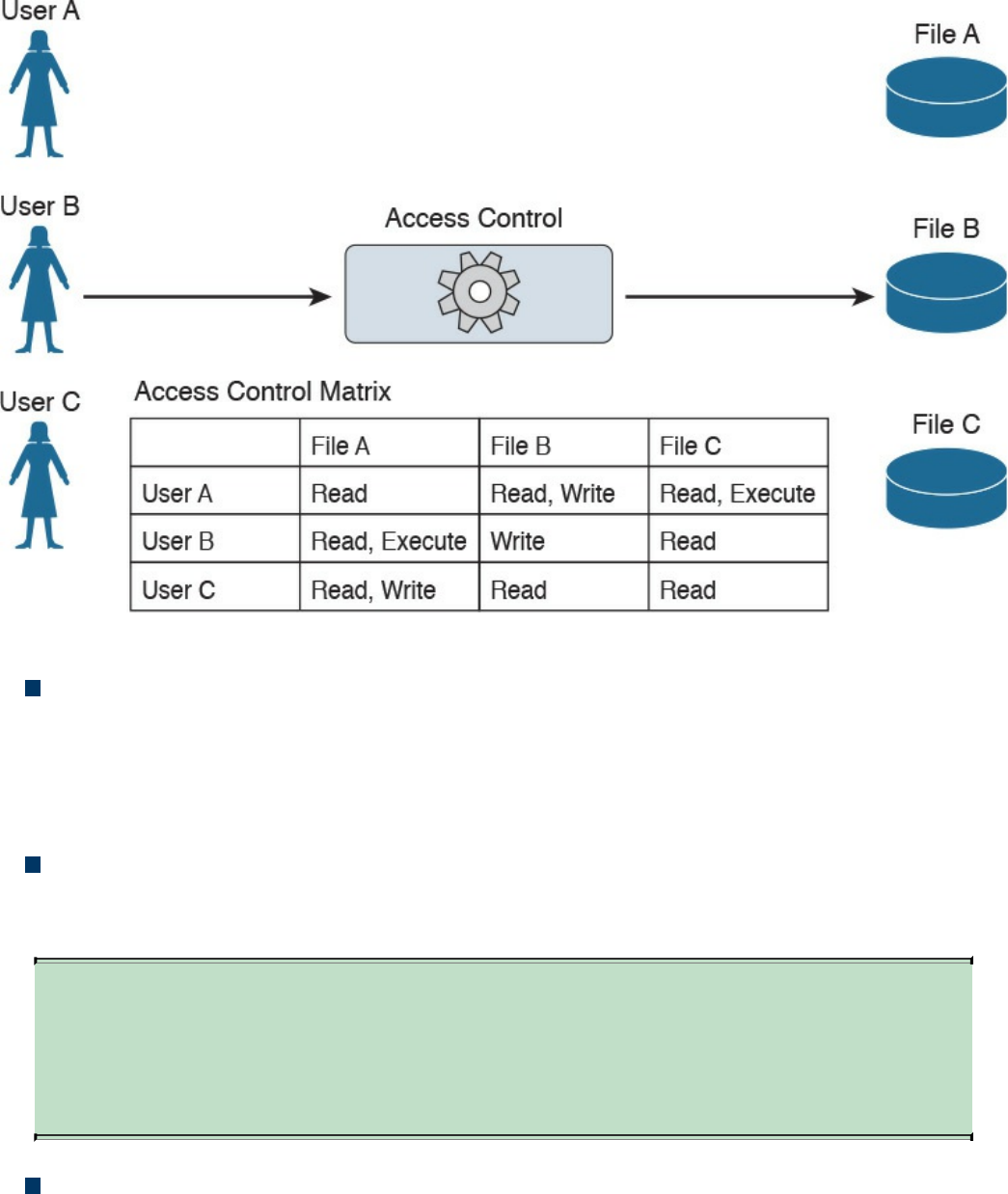

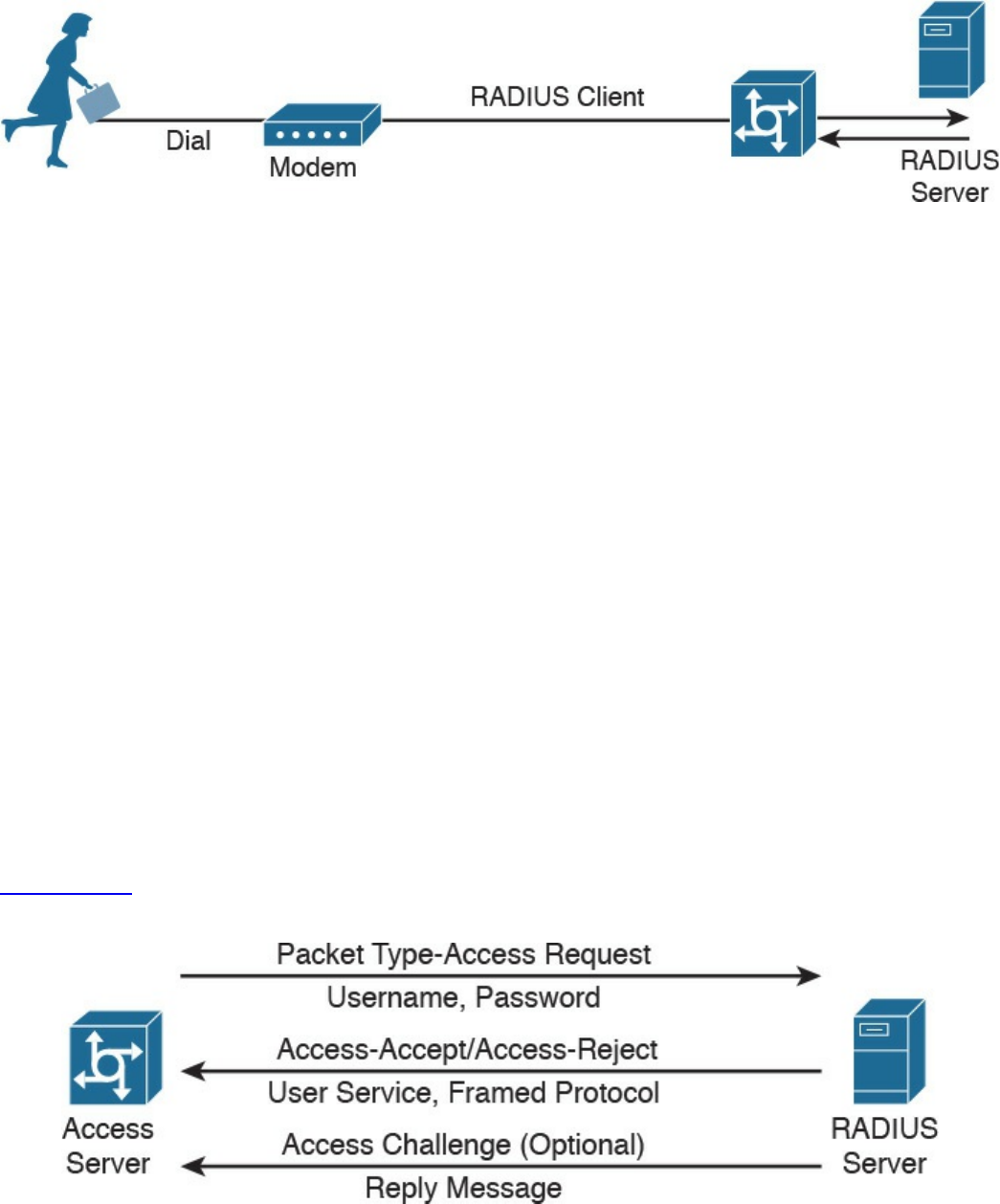

RADIUS

TACACS+

Diameter

Port-Based Access Control

Port Security

802.1X

Network Access Control List and Firewalling

VLAN Map

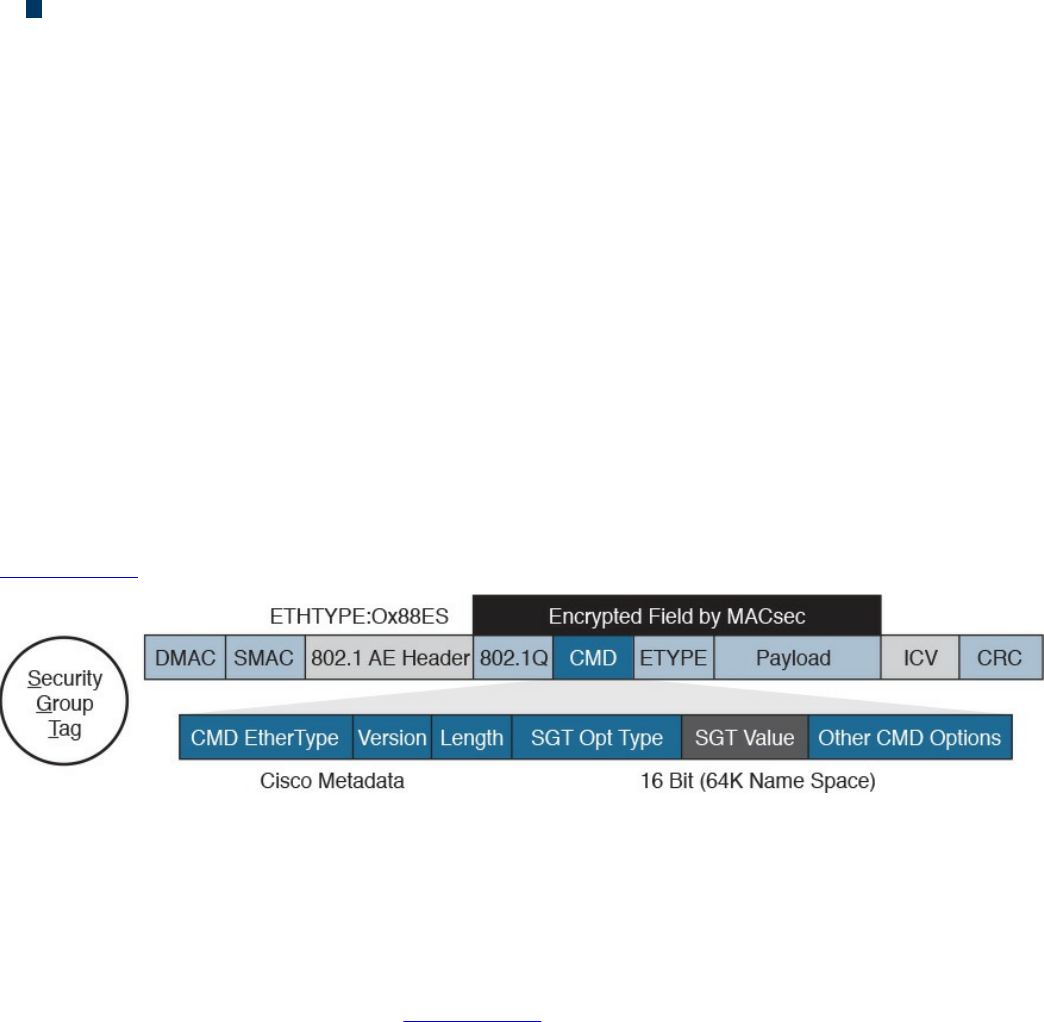

Security Group–Based ACL

Downloadable ACL

Firewalling

Identity Management and Profiling

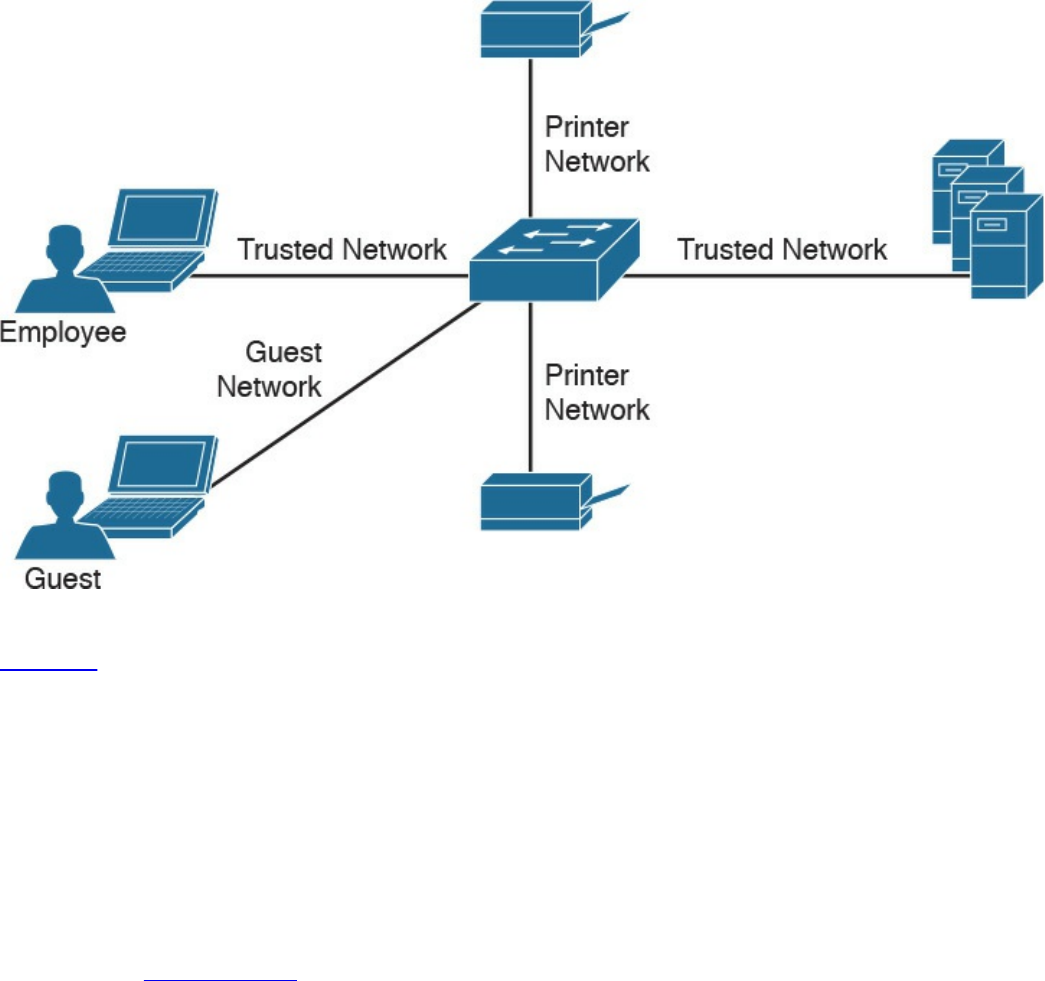

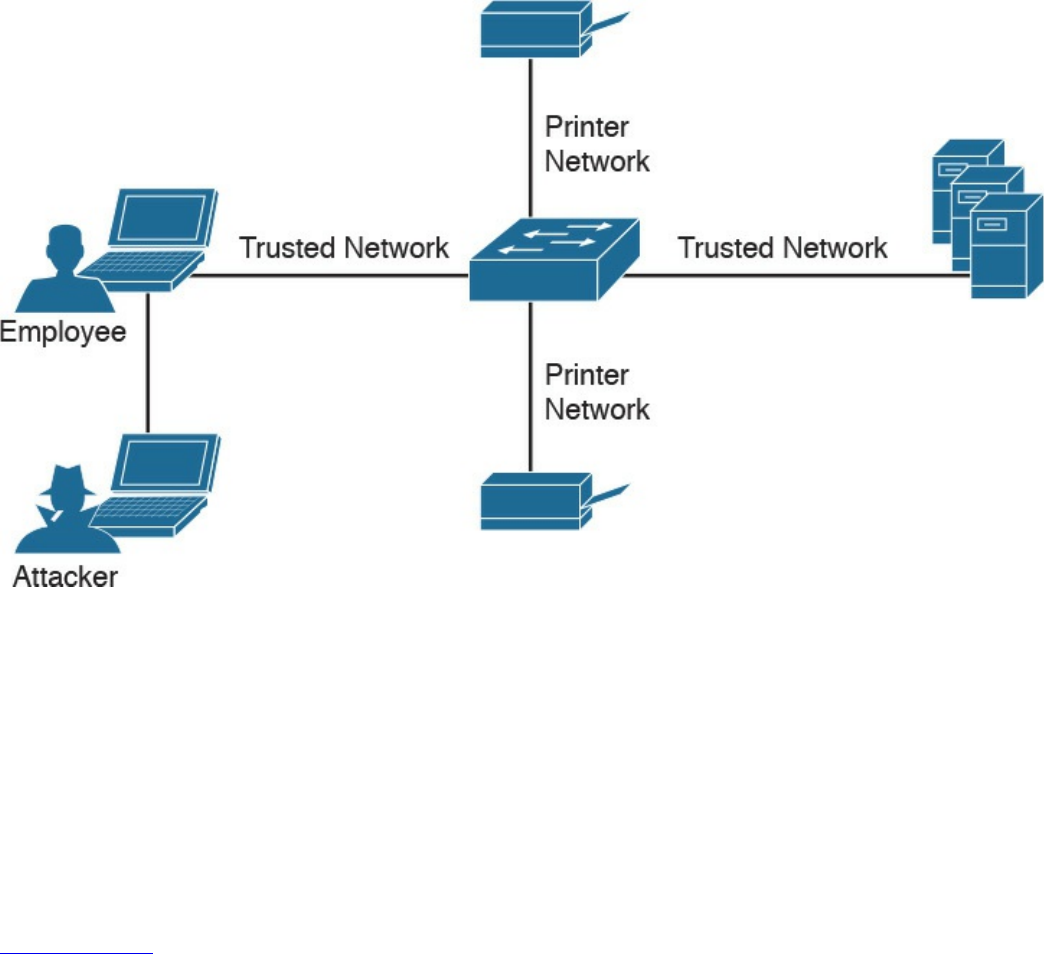

Network Segmentation

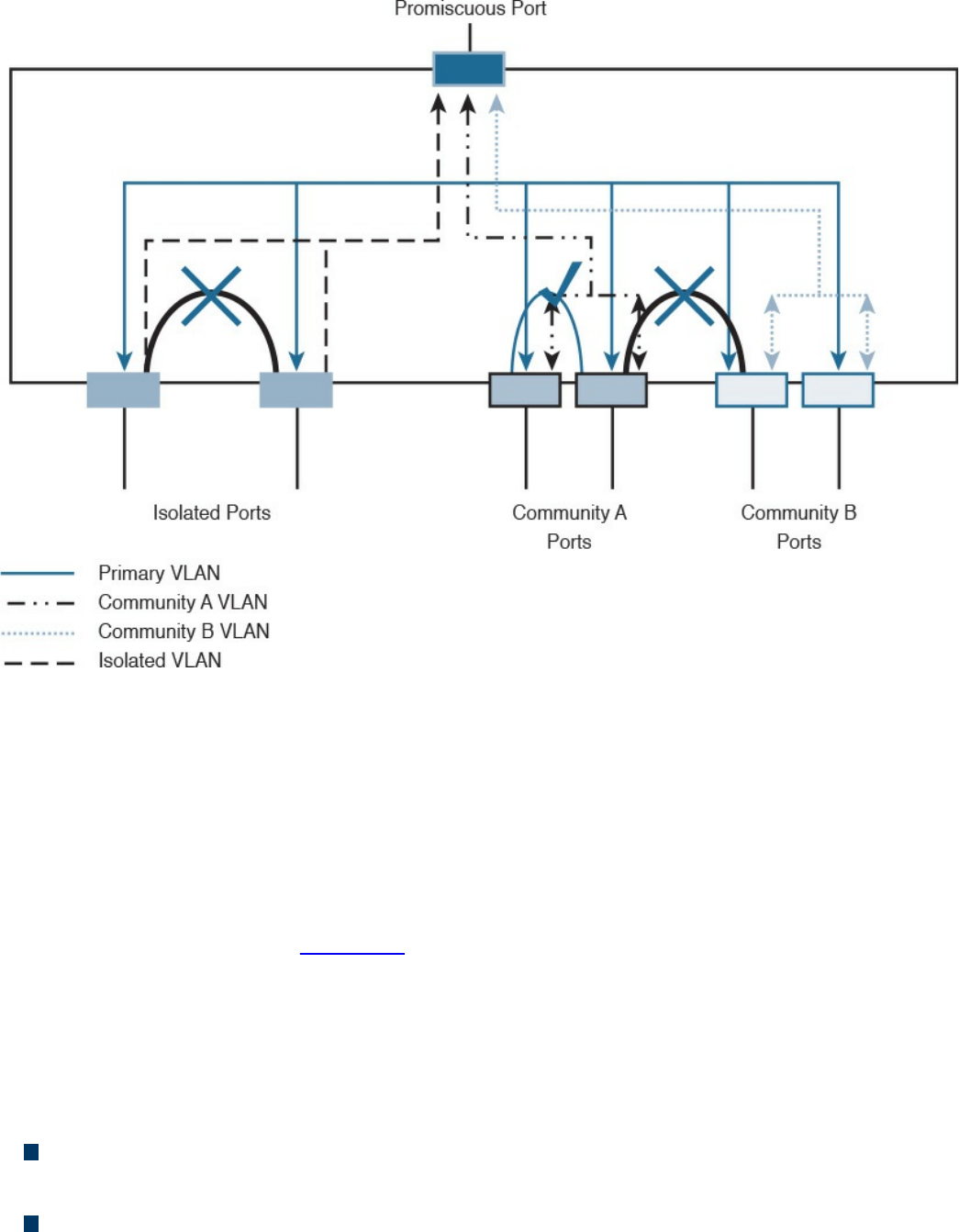

Network Segmentation Through VLAN

Firewall DMZ

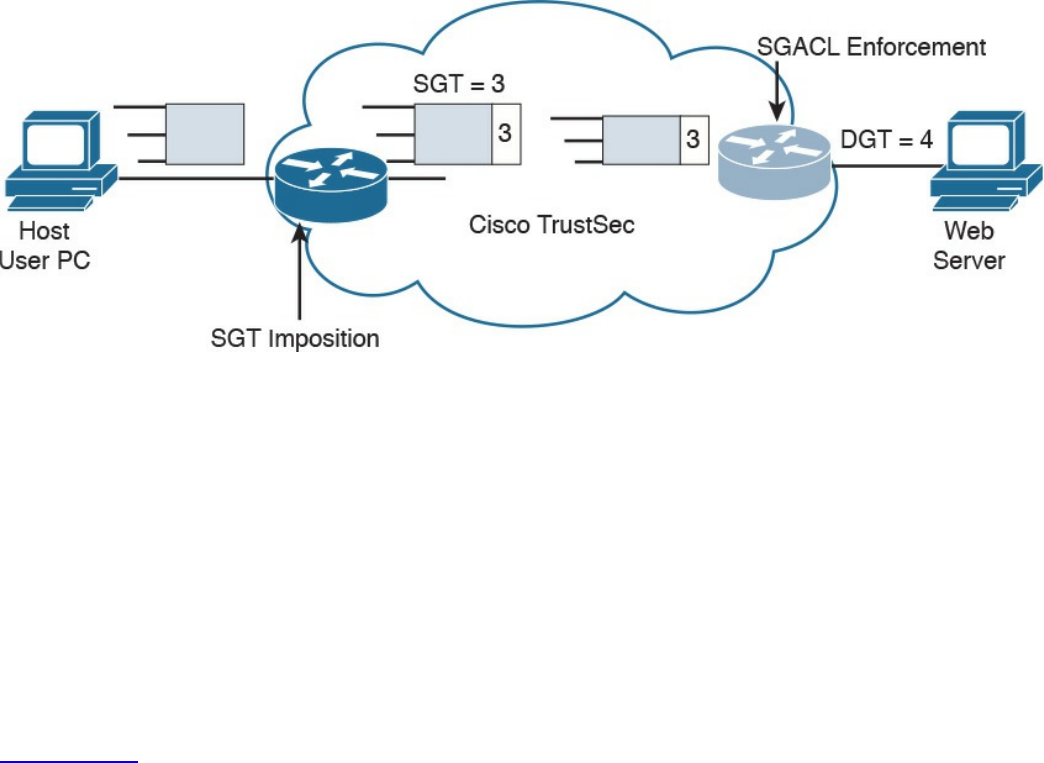

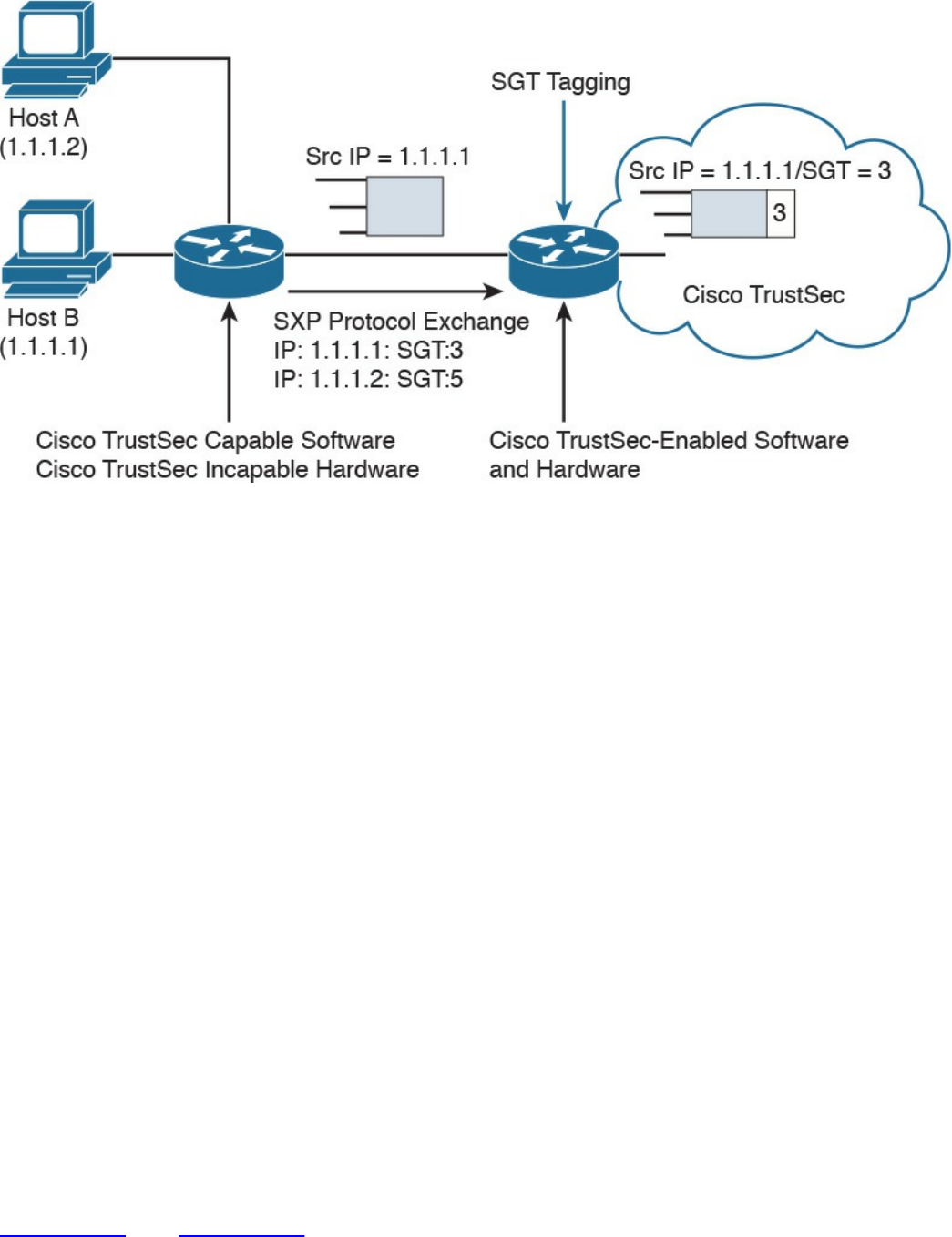

Cisco TrustSec

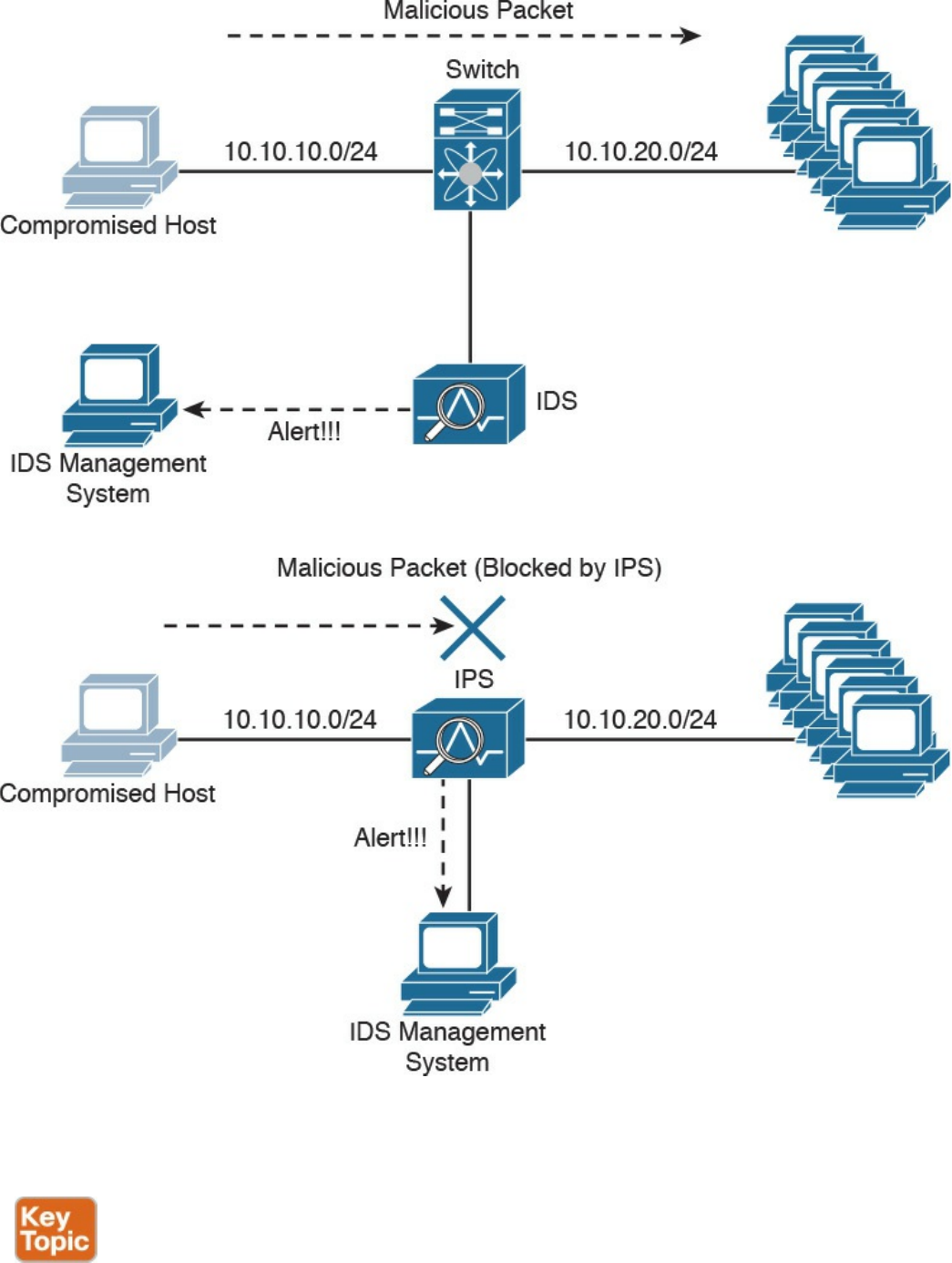

Intrusion Detection and Prevention

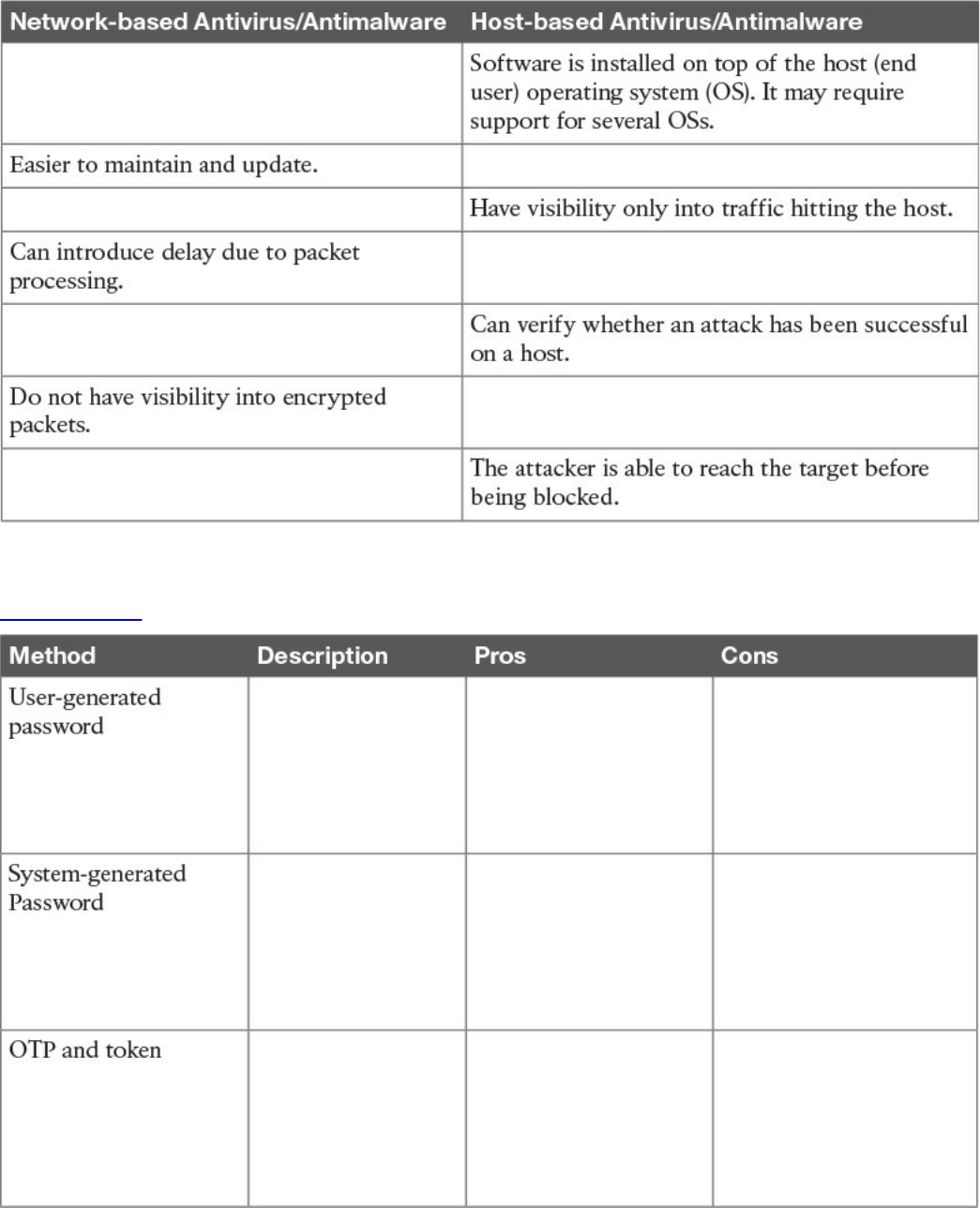

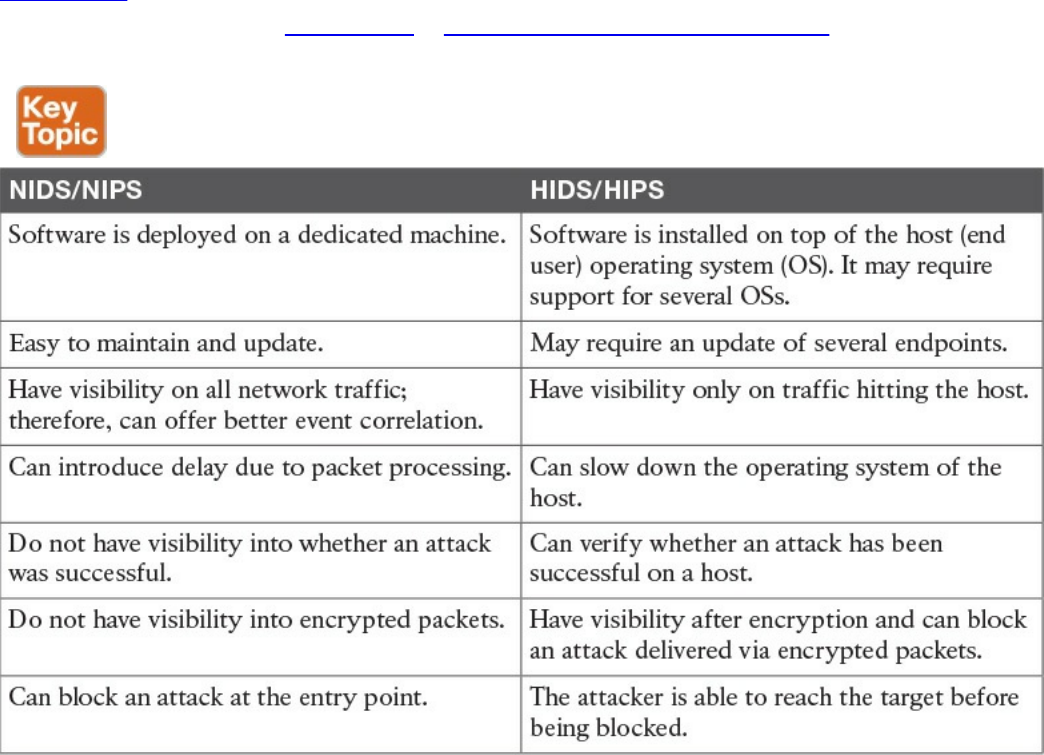

Network-Based Intrusion Detection and Protection System

Host-Based Intrusion Detection and Prevention

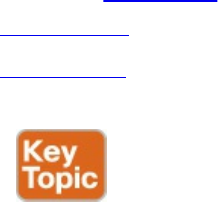

Antivirus and Antimalware

Exam Preparation Tasks

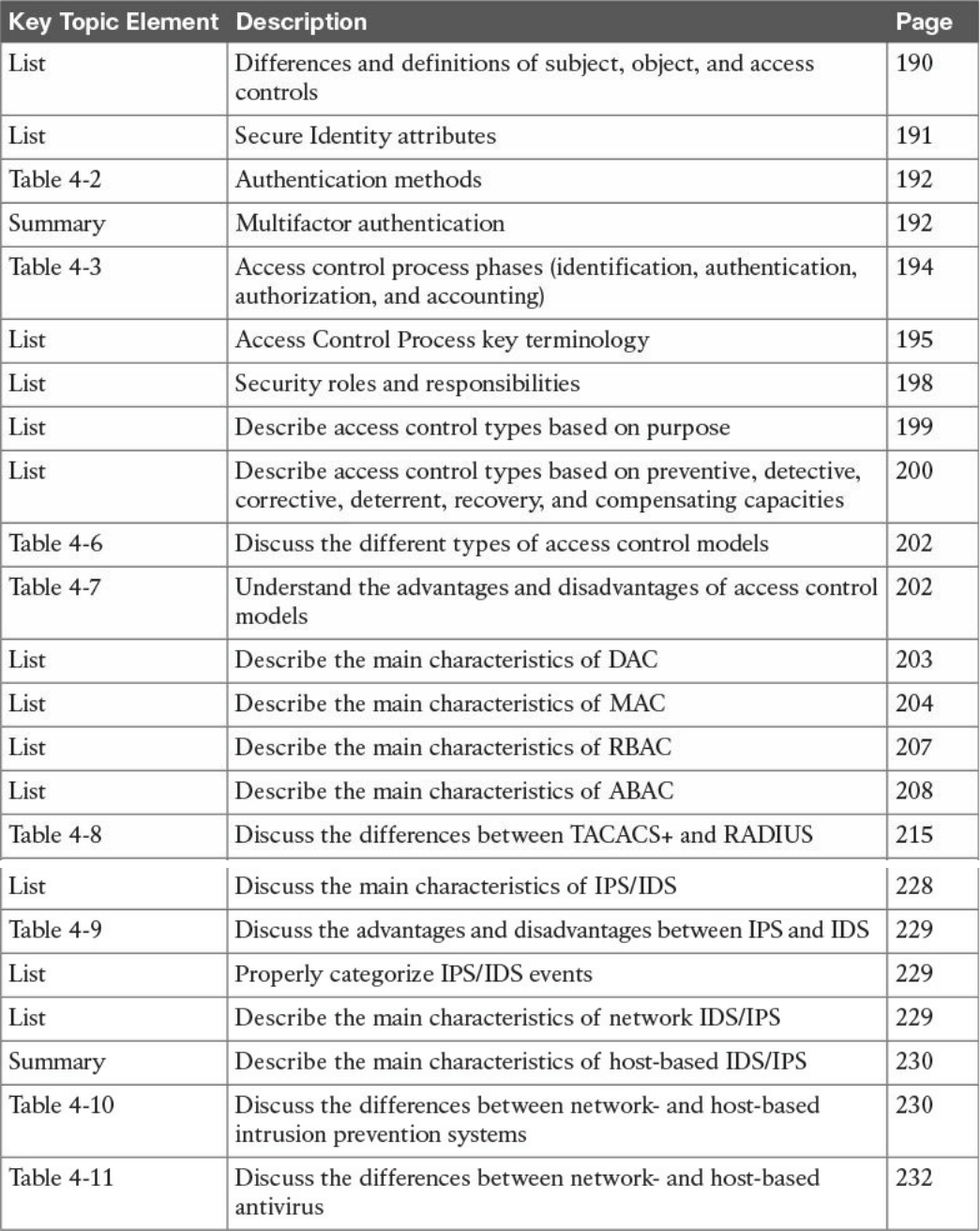

Review All Key Topics

Complete Tables and Lists from Memory

Define Key Terms

Q&A

References and Additional Reading

Chapter 5 Introduction to Security Operations Management

“Do I Know This Already?” Quiz

Foundation Topics

Introduction to Identity and Access Management

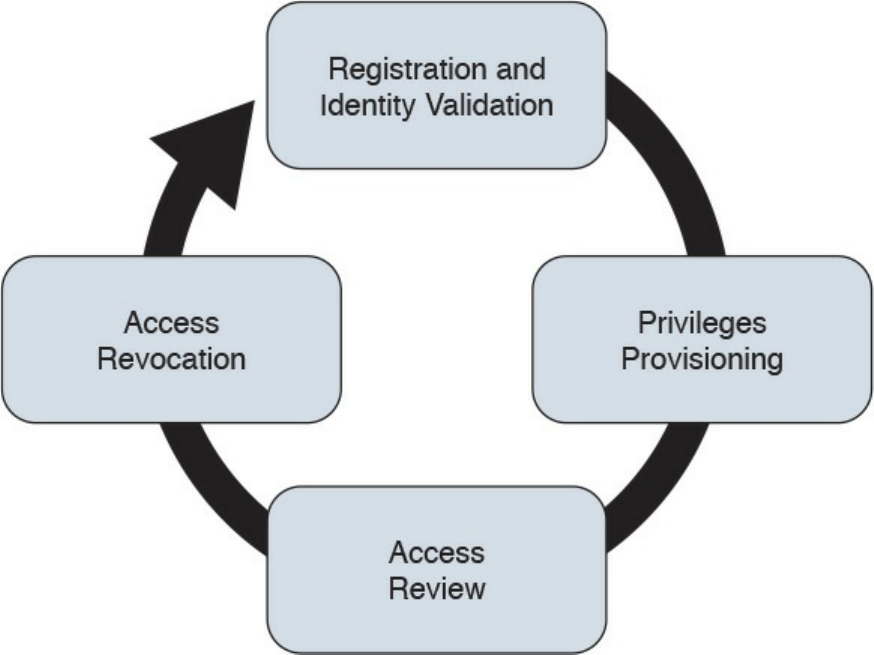

Phases of the Identity and Access Lifecycle

Registration and Identity Validation

Privileges Provisioning

Access Review

Access Revocation

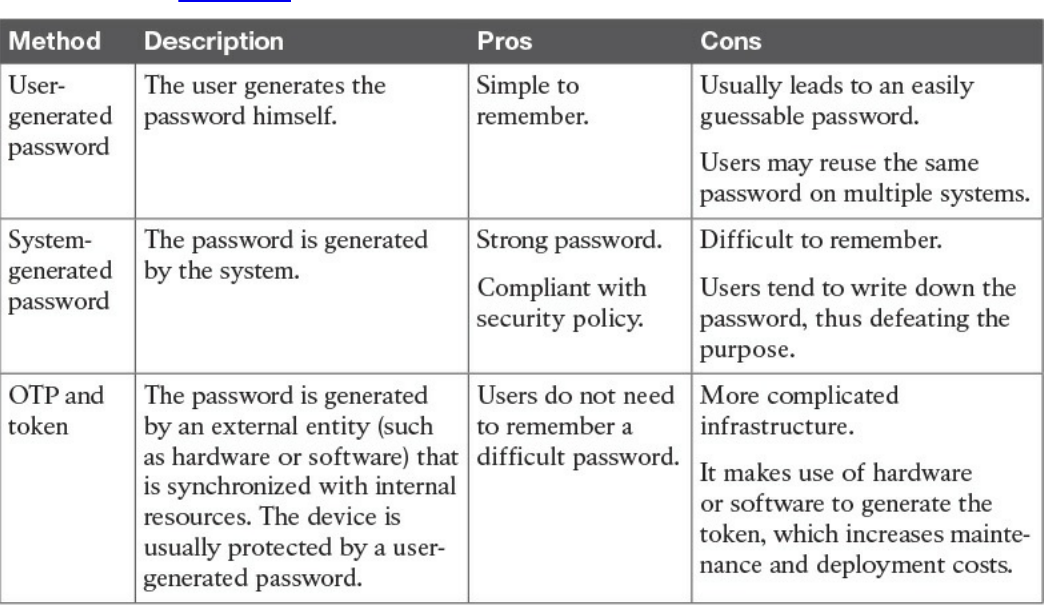

Password Management

Password Creation

Password Storage and Transmission

Password Reset

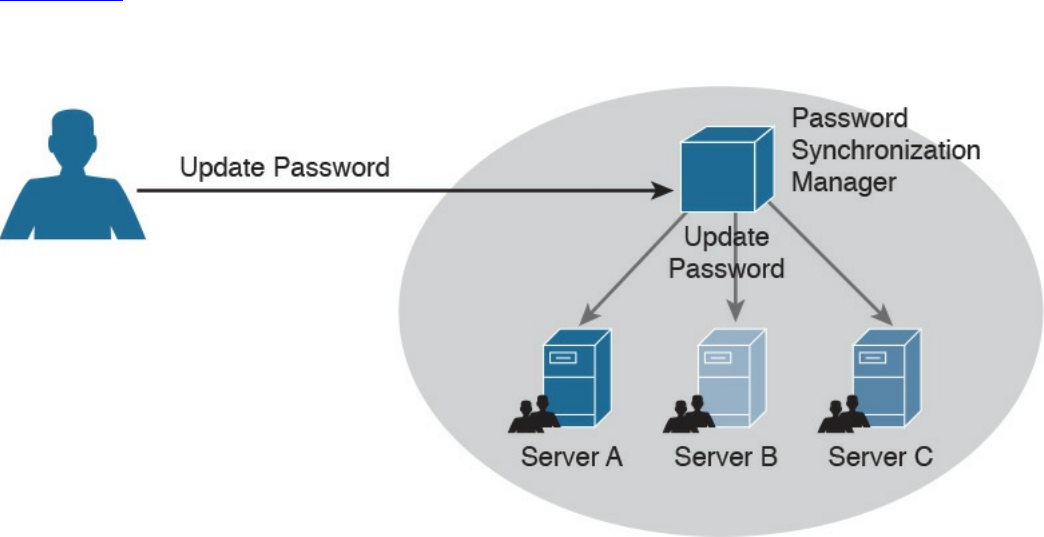

Password Synchronization

Directory Management

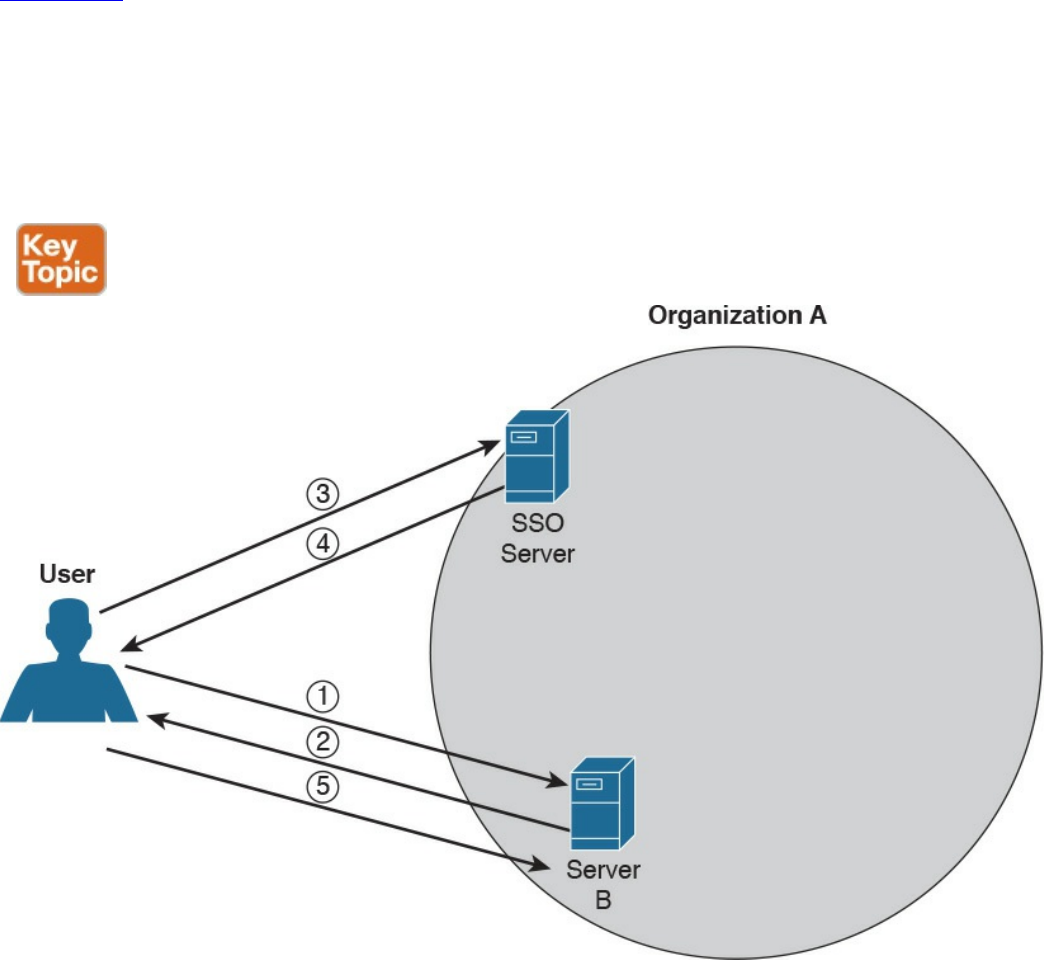

Single Sign-On

www.hellodigi.ir

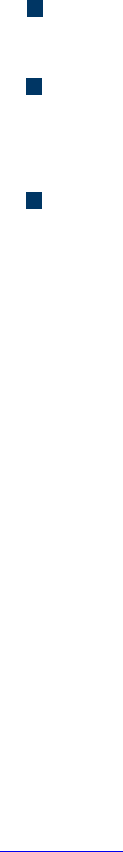

Kerberos

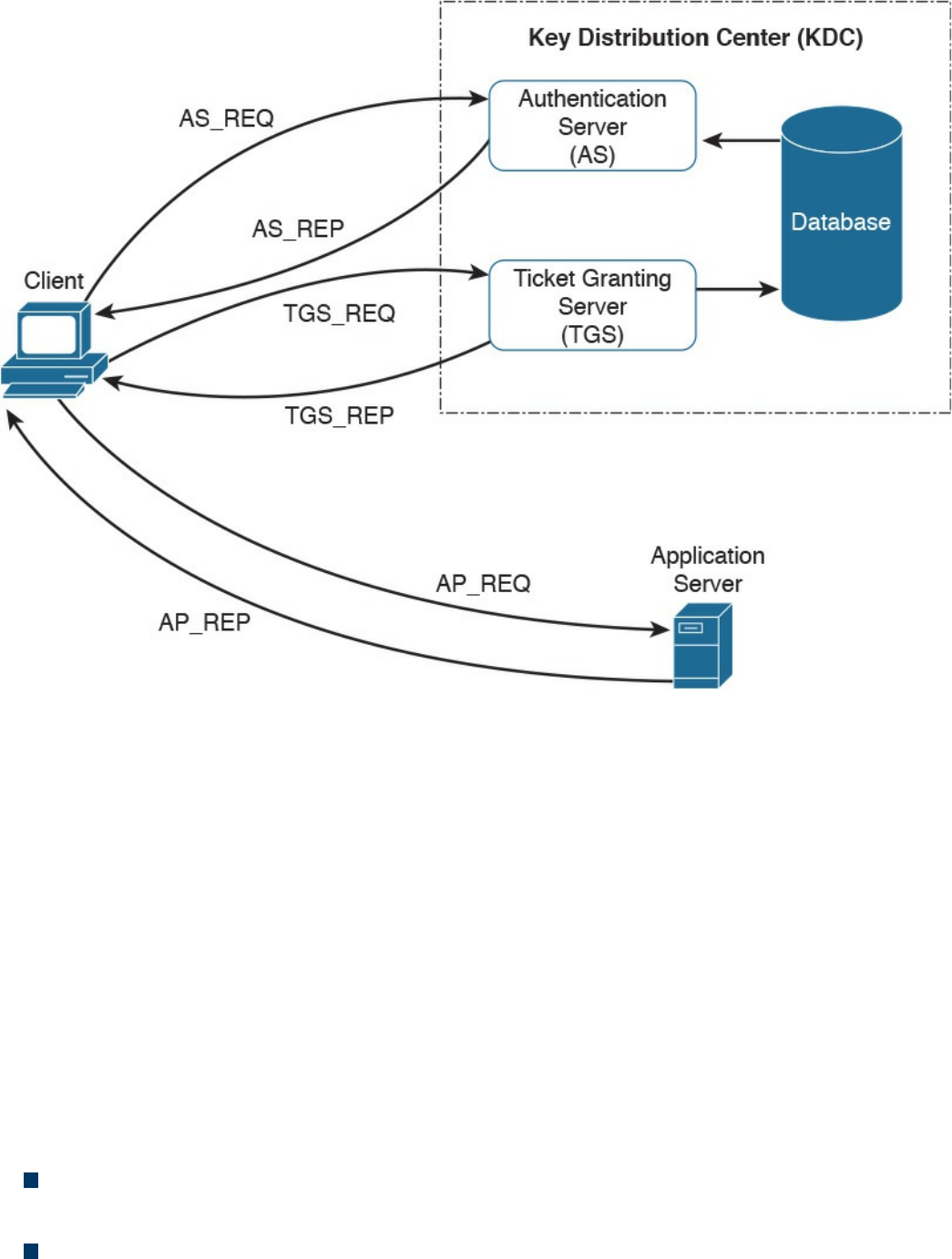

Federated SSO

Security Assertion Markup Language

OAuth

OpenID Connect

Security Events and Logs Management

Logs Collection, Analysis, and Disposal

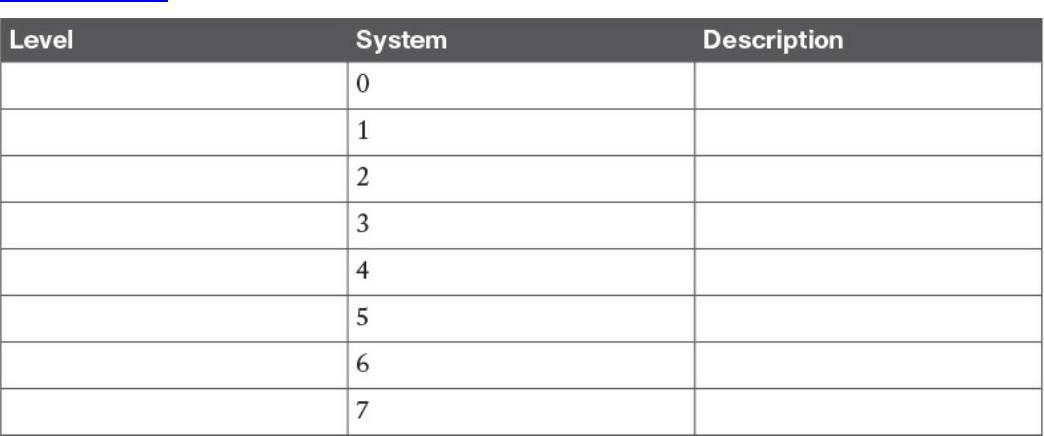

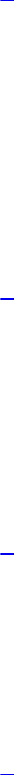

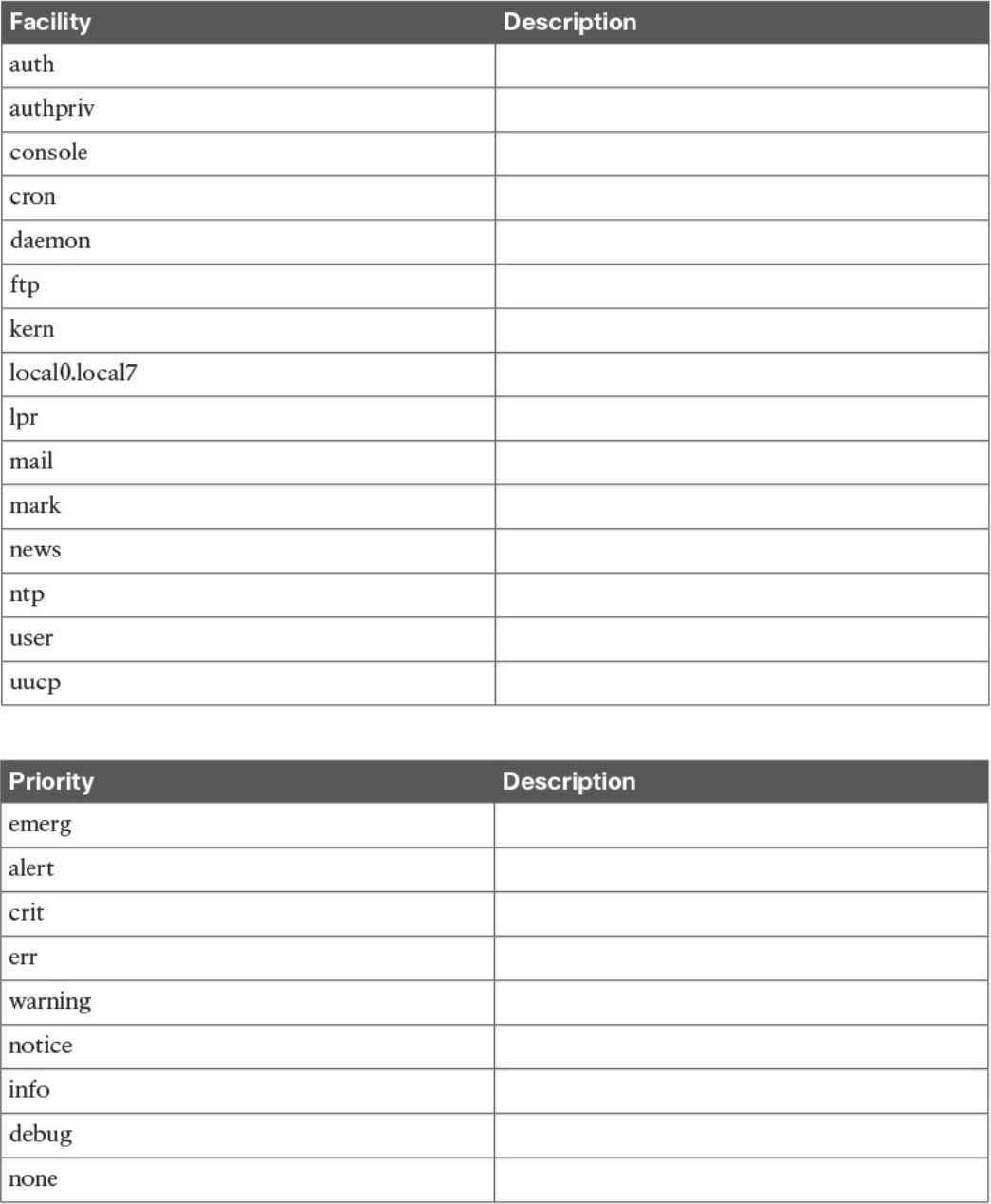

Syslog

Security Information and Event Manager

Assets Management

Assets Inventory

Assets Ownership

Assets Acceptable Use and Return Policies

Assets Classification

Assets Labeling

Assets and Information Handling

Media Management

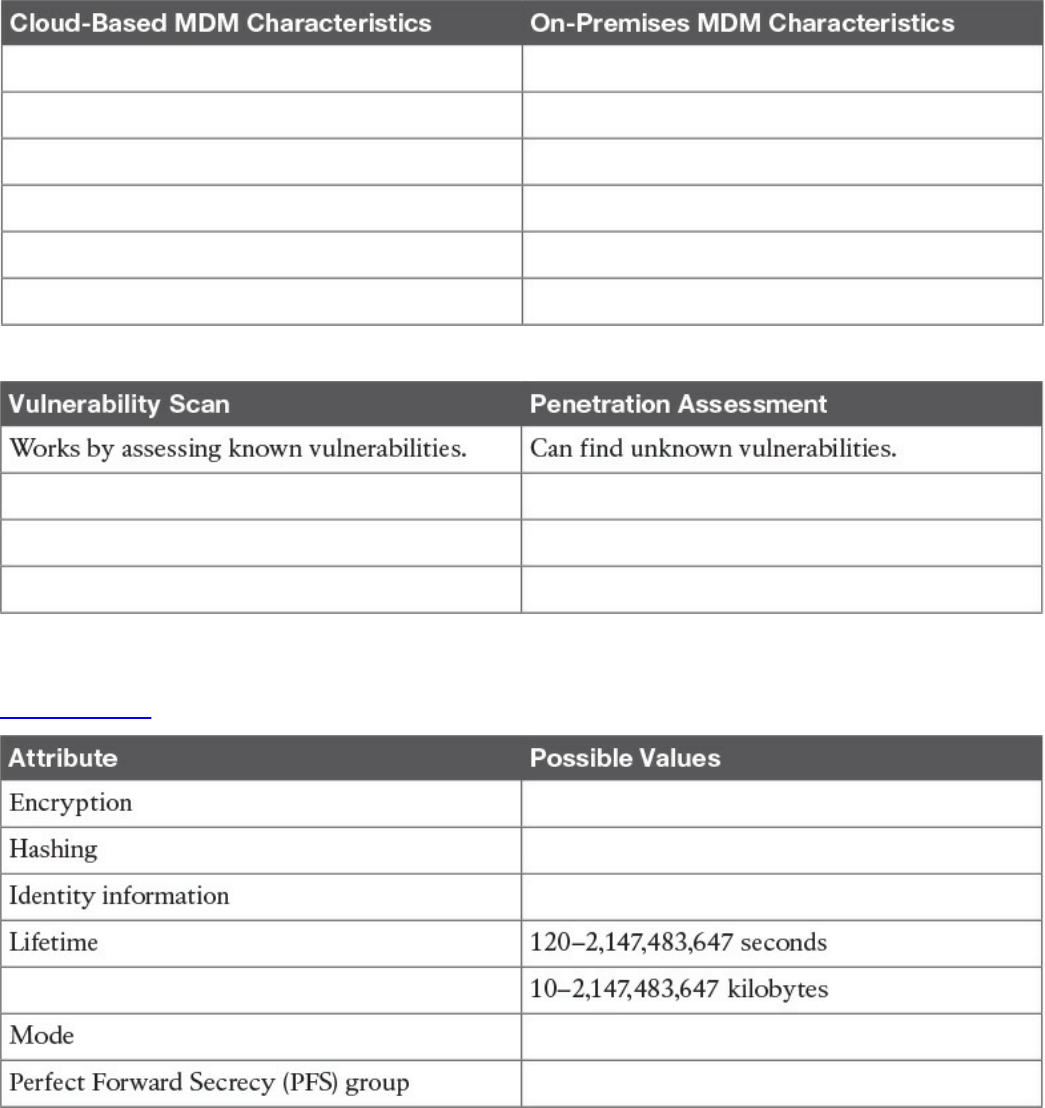

Introduction to Enterprise Mobility Management

Mobile Device Management

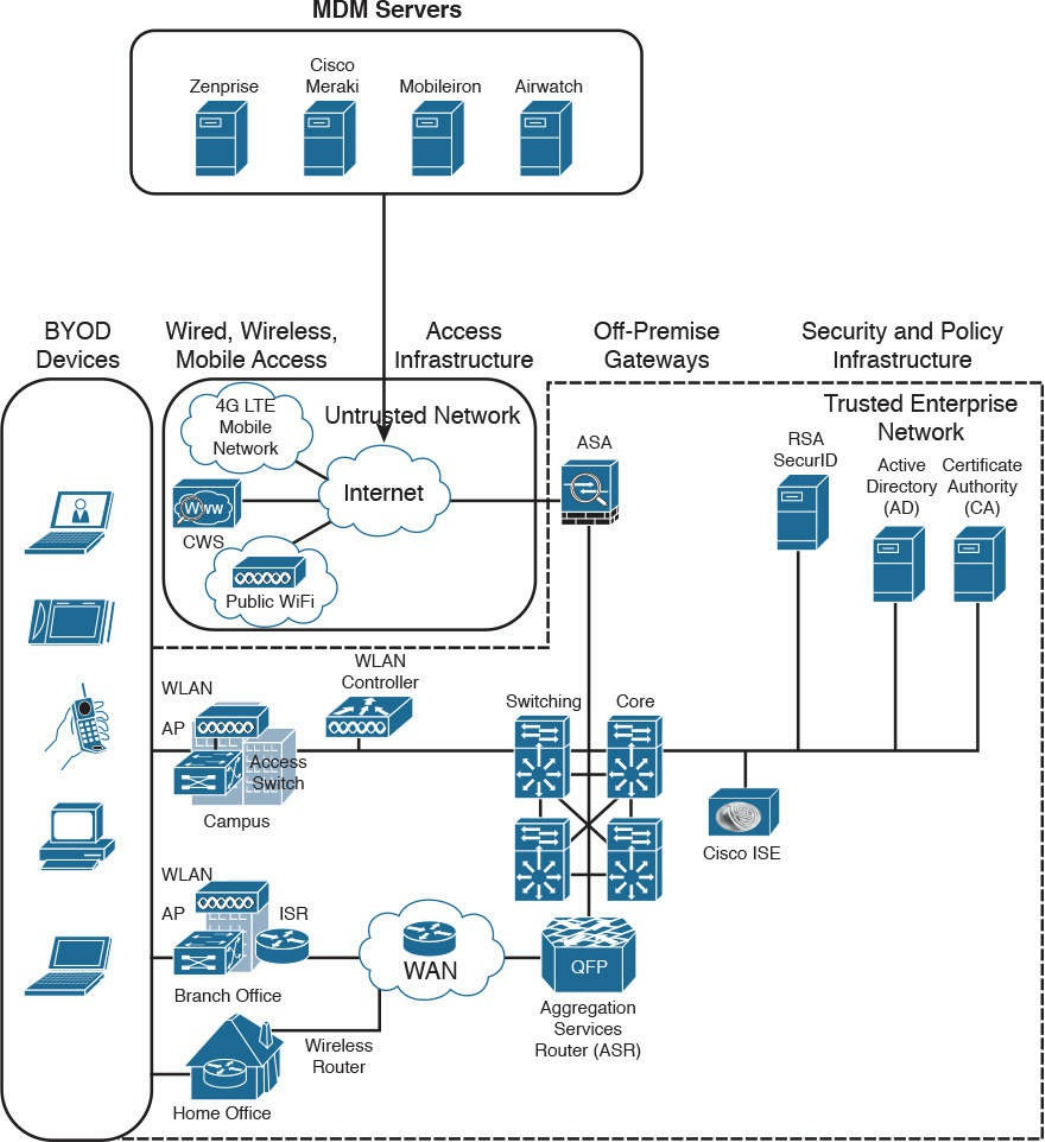

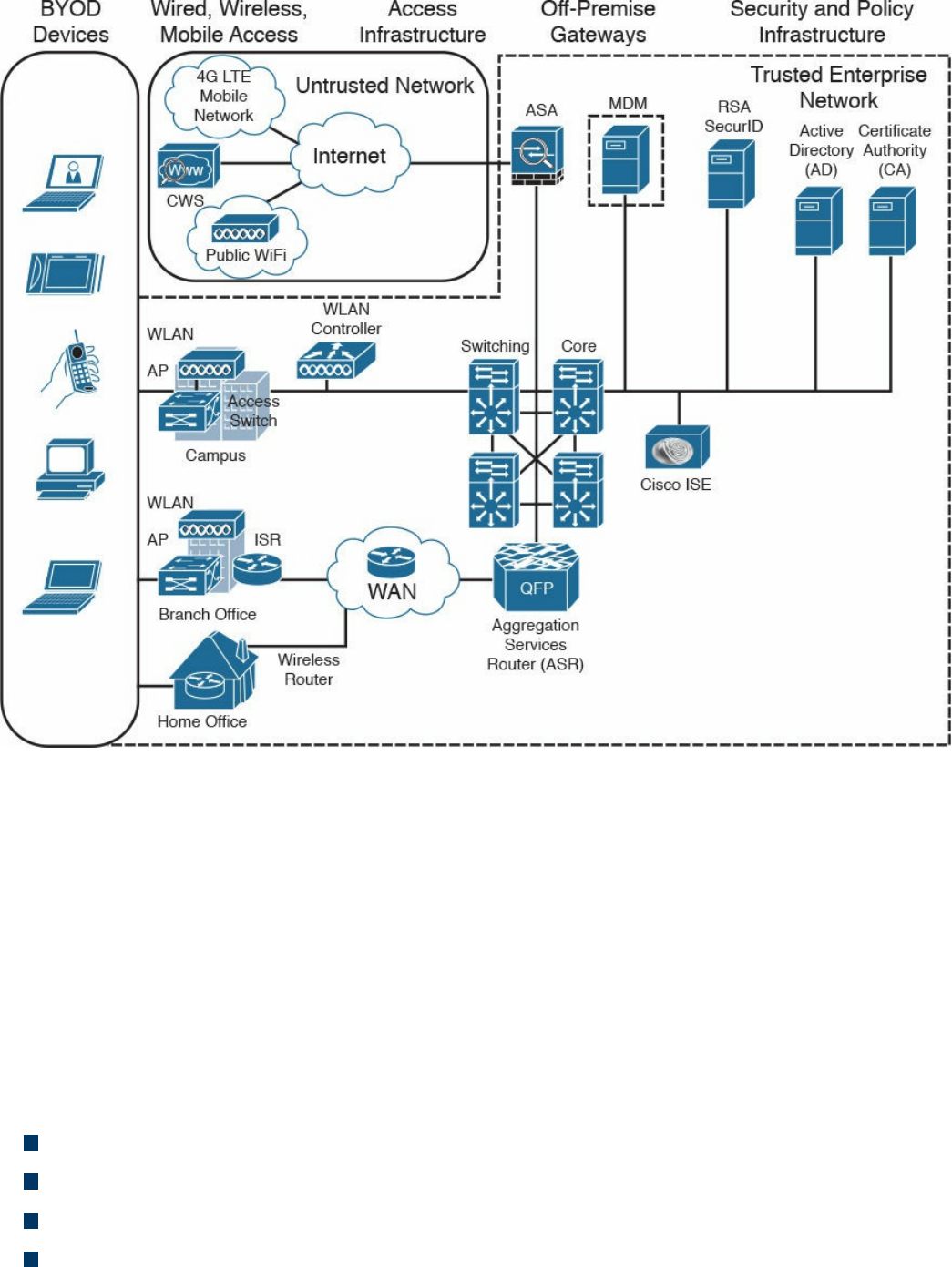

Cisco BYOD Architecture

Cisco ISE and MDM Integration

Cisco Meraki Enterprise Mobility Management

Configuration and Change Management

Configuration Management

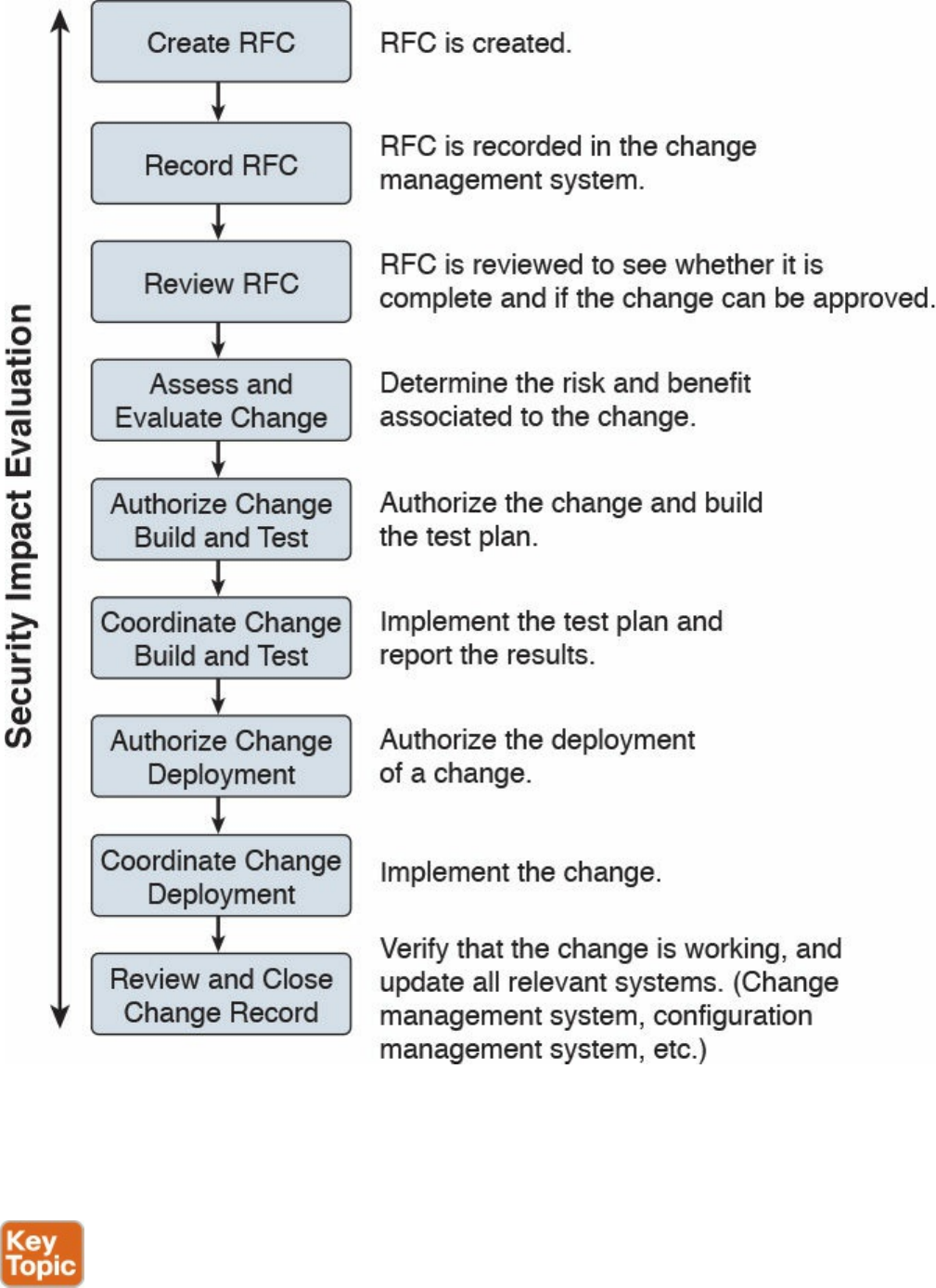

Change Management

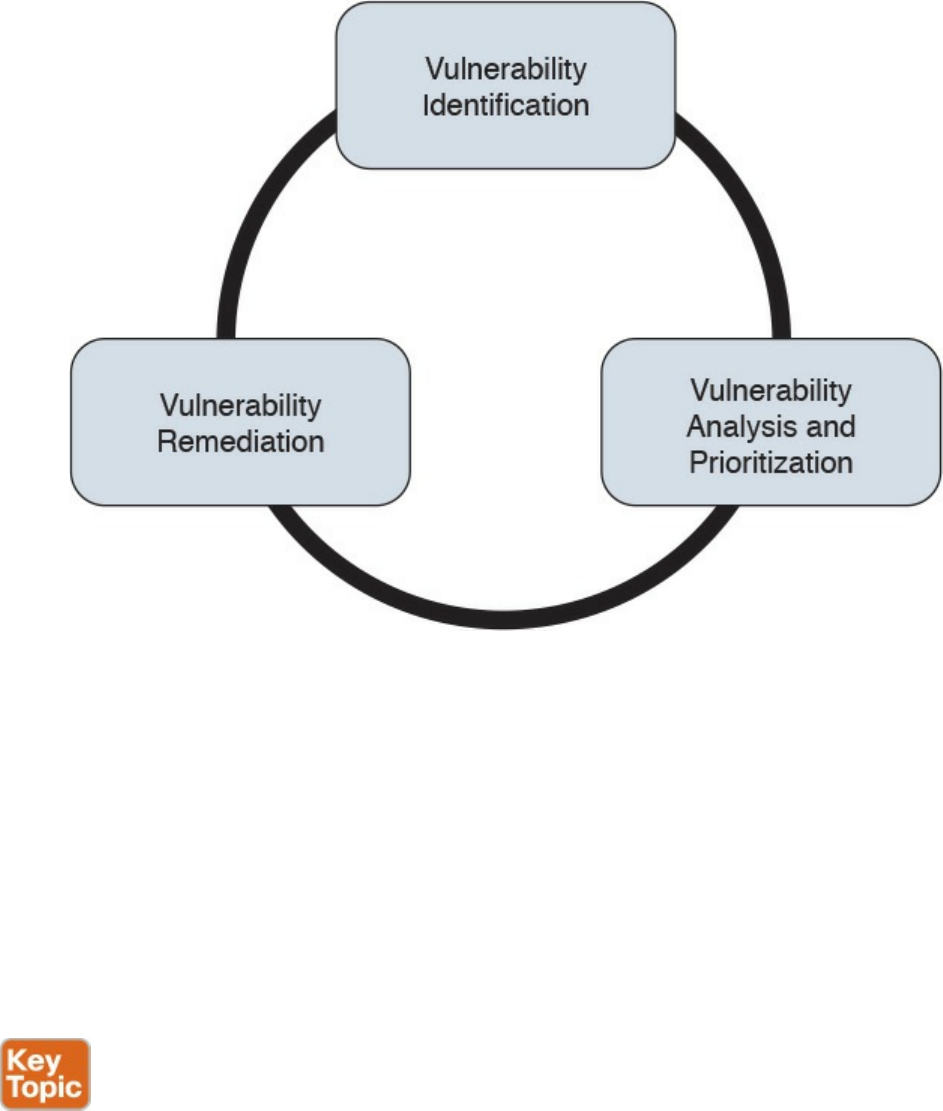

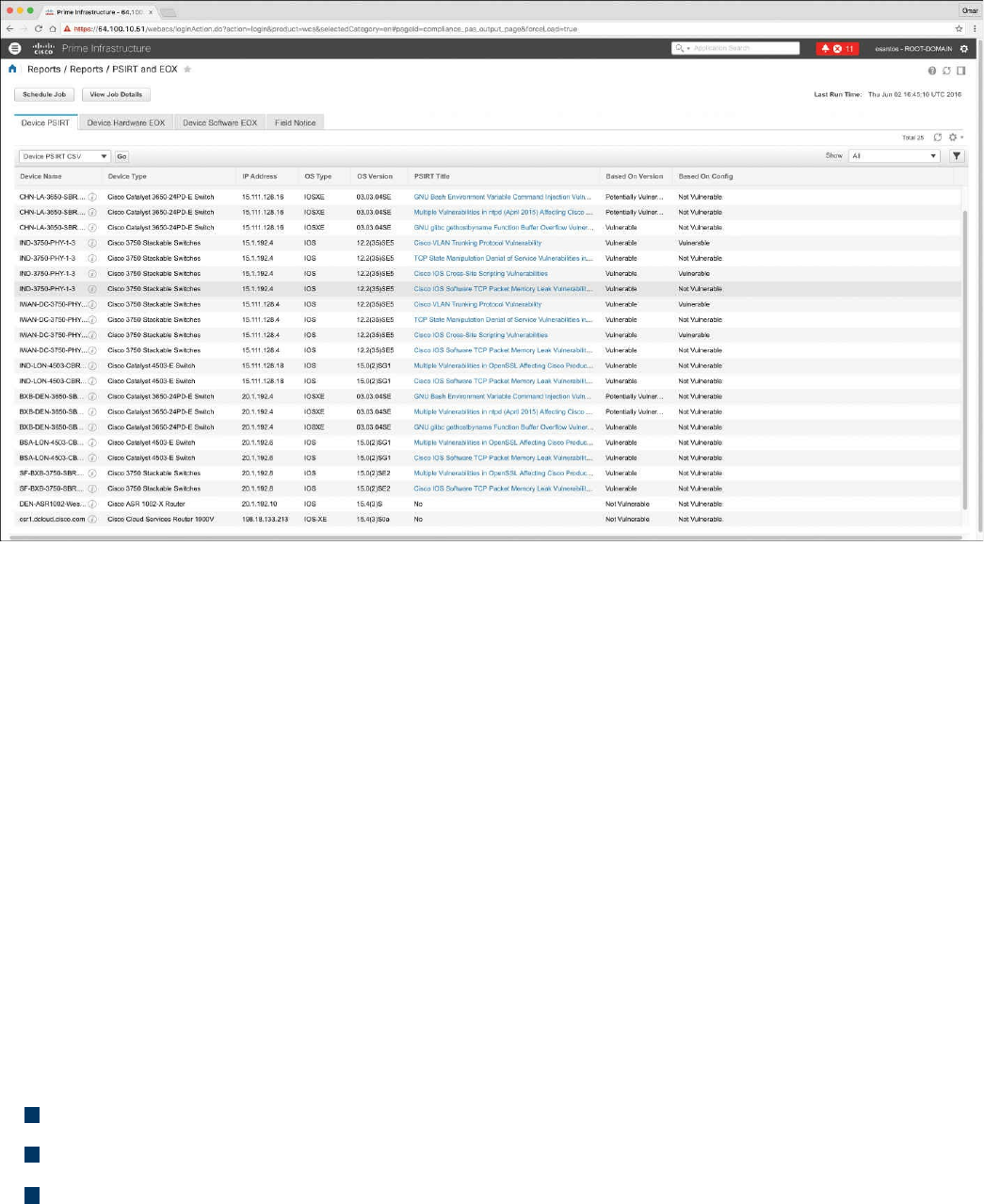

Vulnerability Management

Vulnerability Identification

Finding Information about a Vulnerability

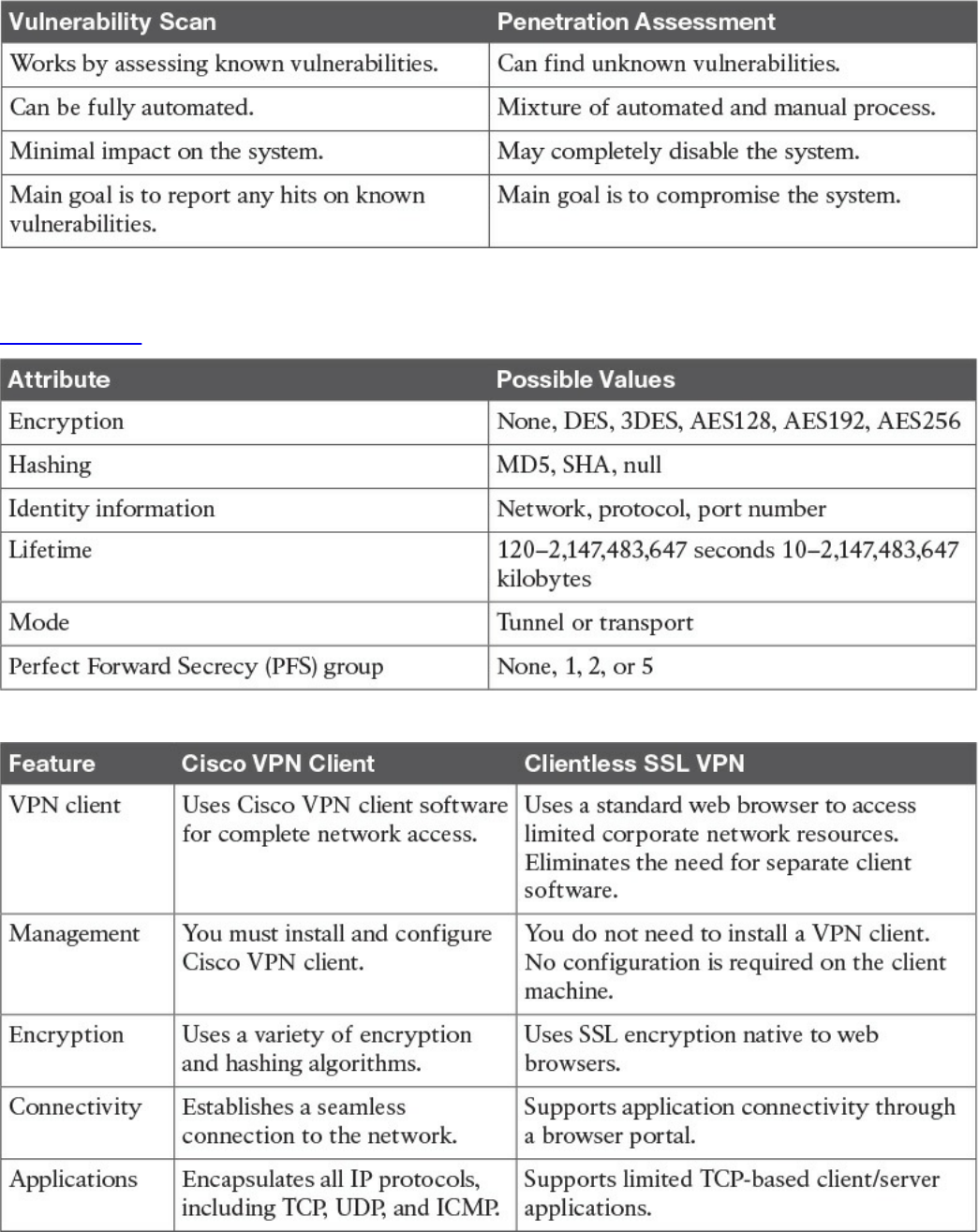

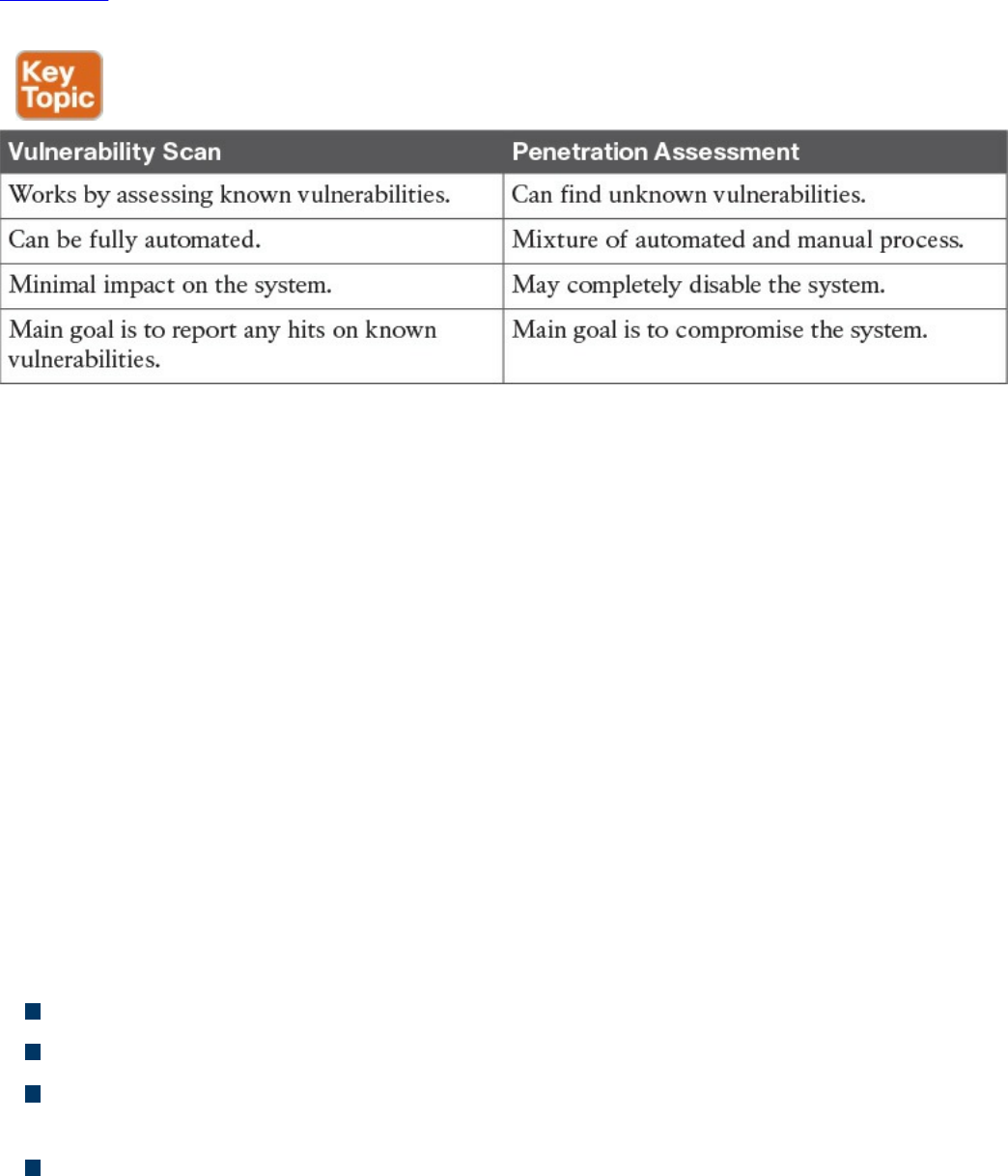

Vulnerability Scan

Penetration Assessment

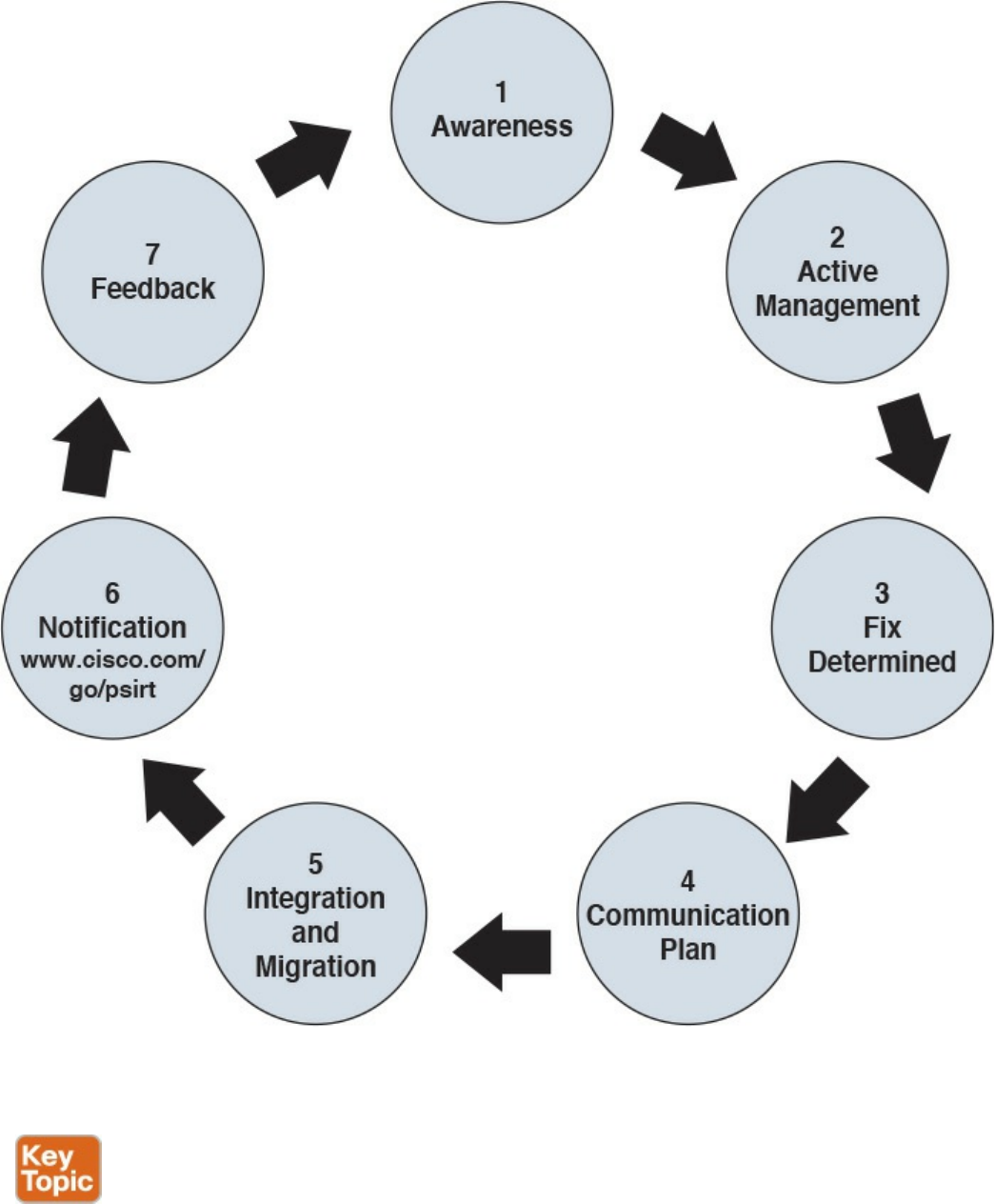

Product Vulnerability Management

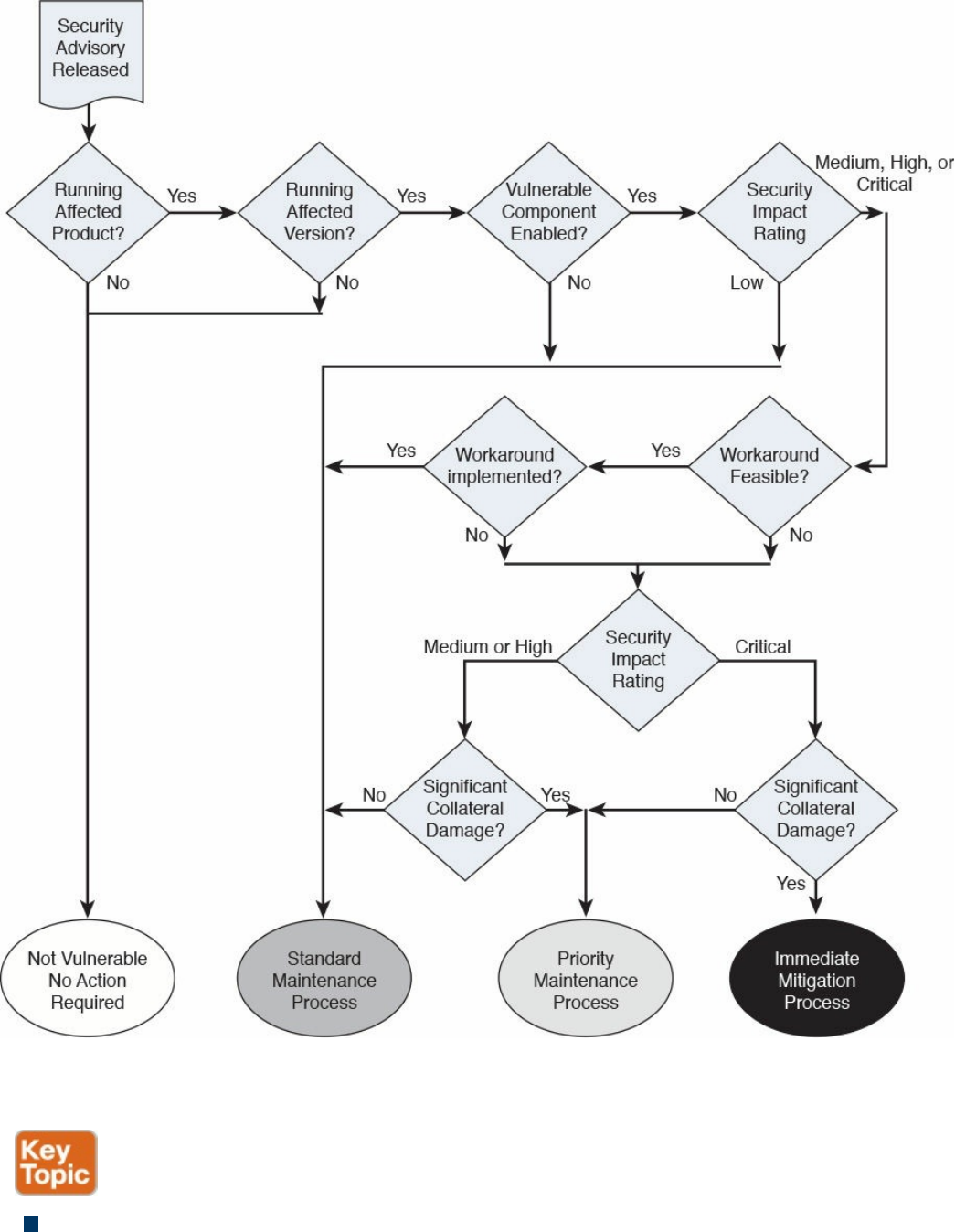

Vulnerability Analysis and Prioritization

Vulnerability Remediation

Patch Management

References and Additional Readings

Exam Preparation Tasks

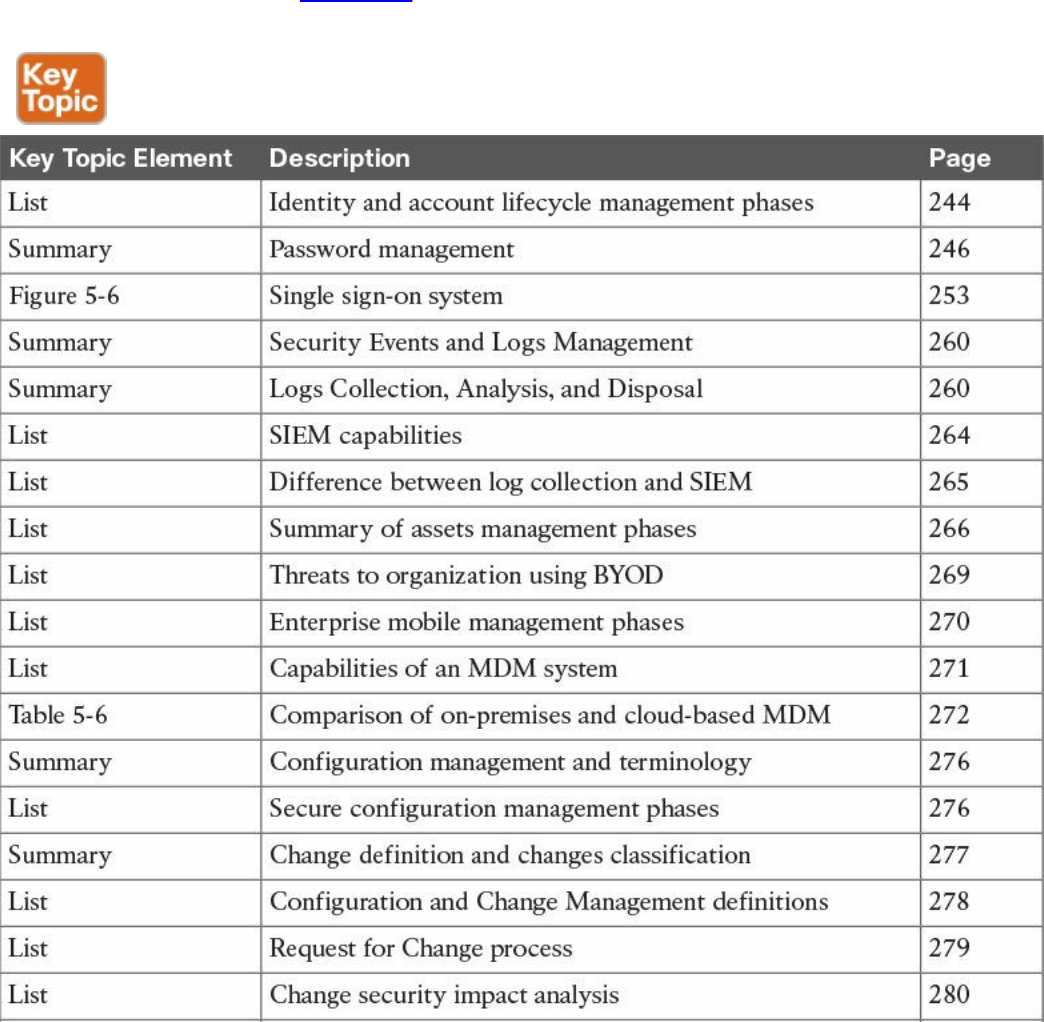

Review All Key Topics

Complete Tables and Lists from Memory

Define Key Terms

Q&A

Part III Cryptography

Chapter 6 Fundamentals of Cryptography and Public Key Infrastructure (PKI)

“Do I Know This Already?” Quiz

Foundation Topics

Cryptography

Ciphers and Keys

Ciphers

Keys

Block and Stream Ciphers

Symmetric and Asymmetric Algorithms

Symmetric Algorithms

Asymmetric Algorithms

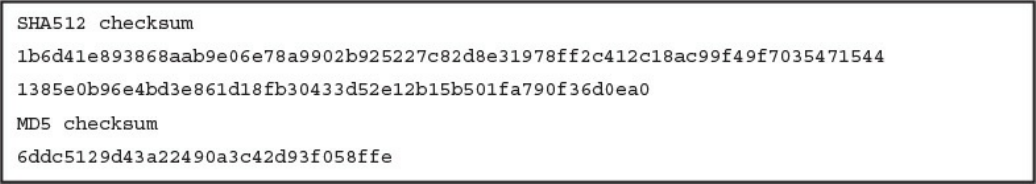

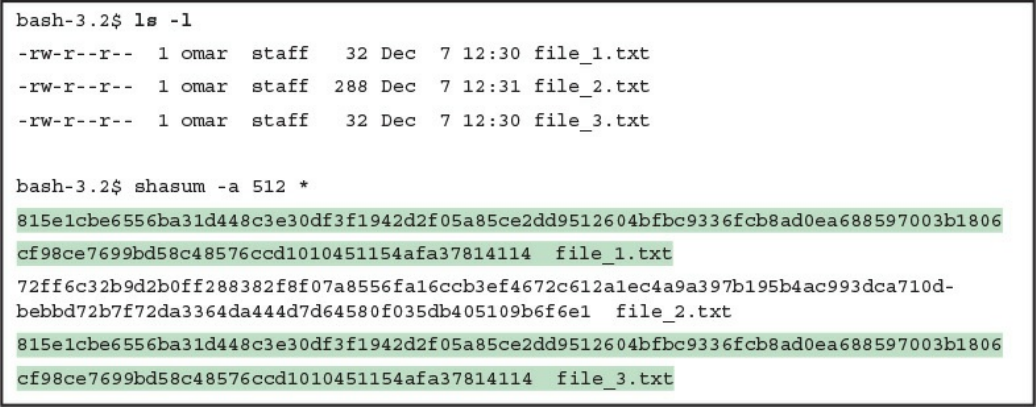

Hashes

Hashed Message Authentication Code

Digital Signatures

Digital Signatures in Action

Key Management

Next-Generation Encryption Protocols

IPsec and SSL

IPsec

SSL

Fundamentals of PKI

Public and Private Key Pairs

RSA Algorithm, the Keys, and Digital Certificates

www.hellodigi.ir

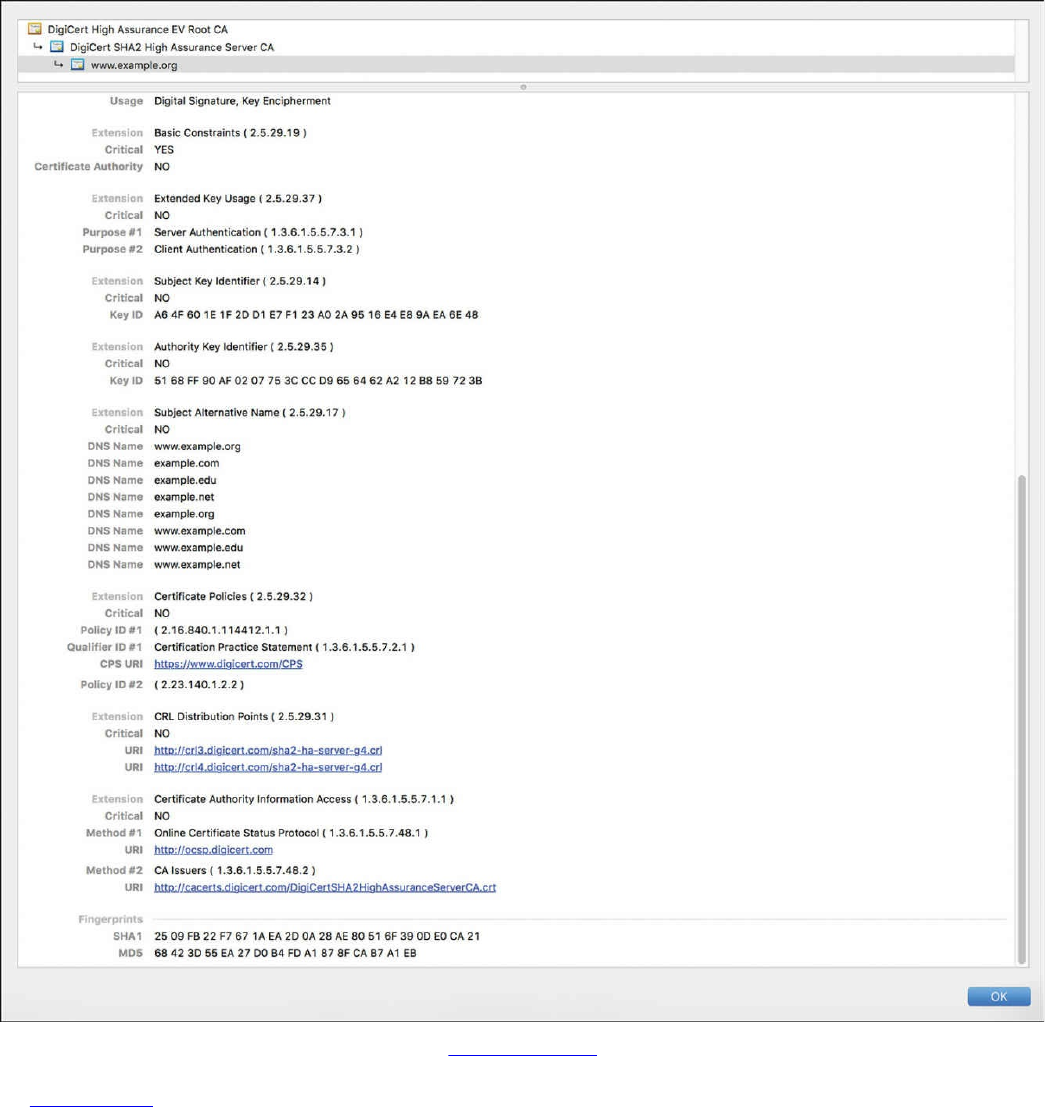

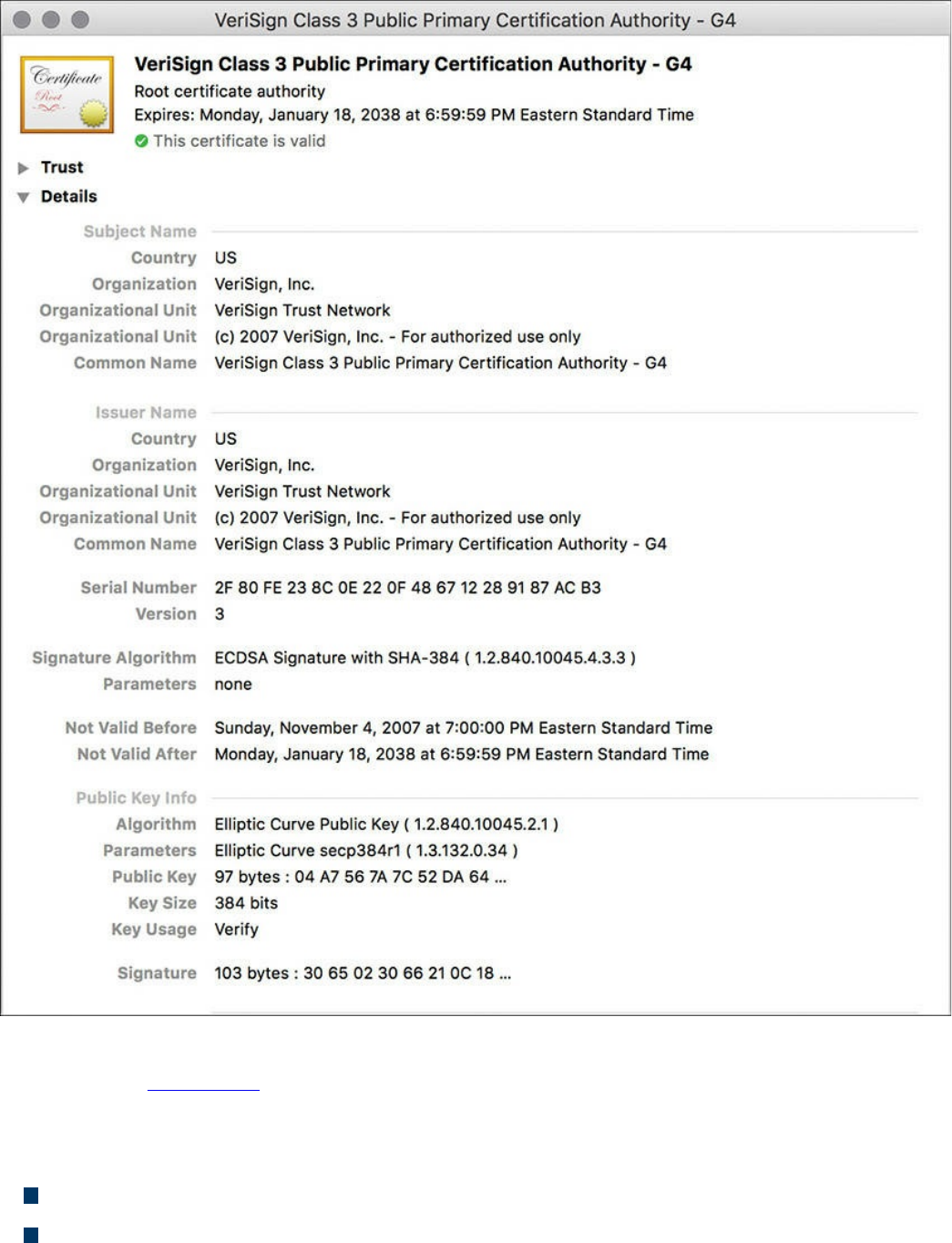

Certificate Authorities

Root and Identity Certificates

Root Certificate

Identity Certificate

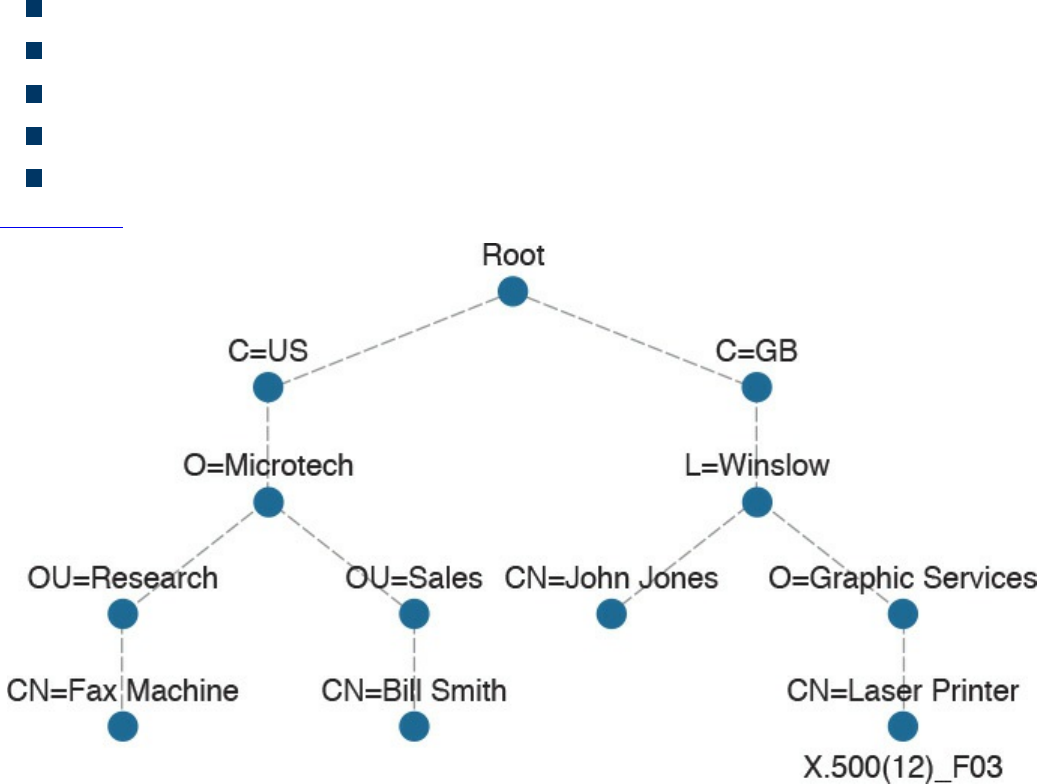

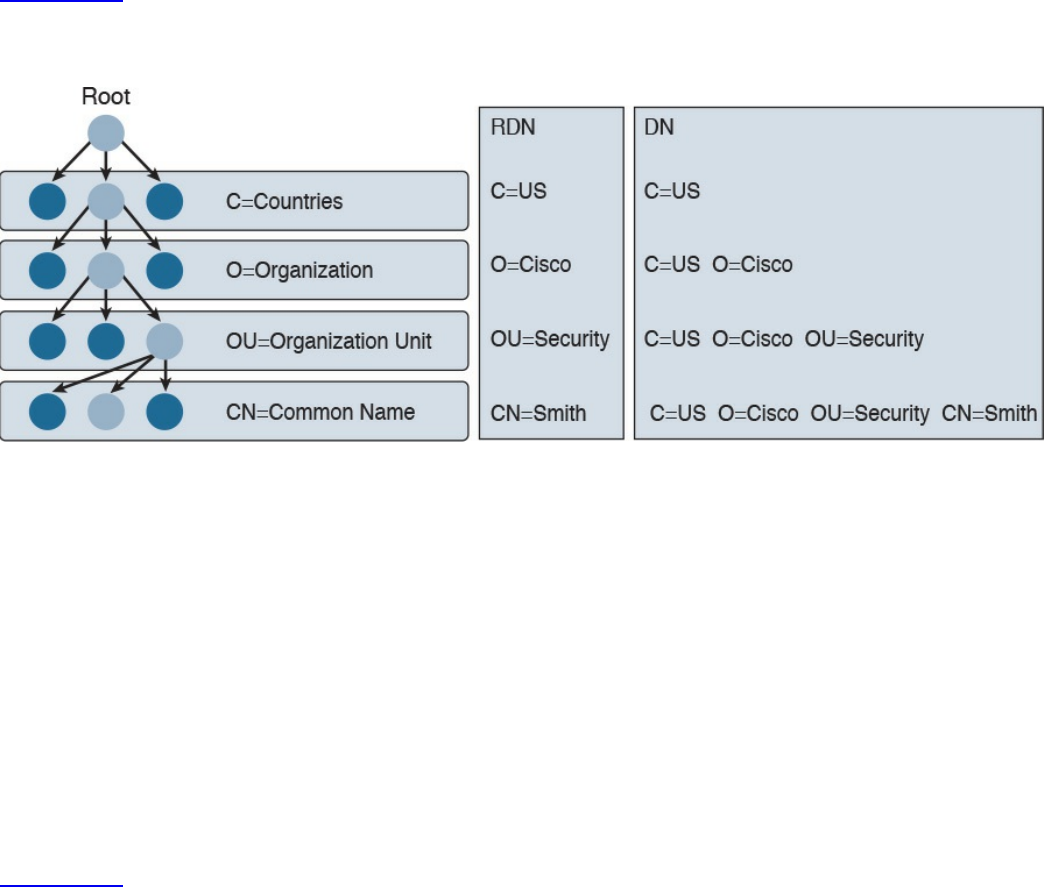

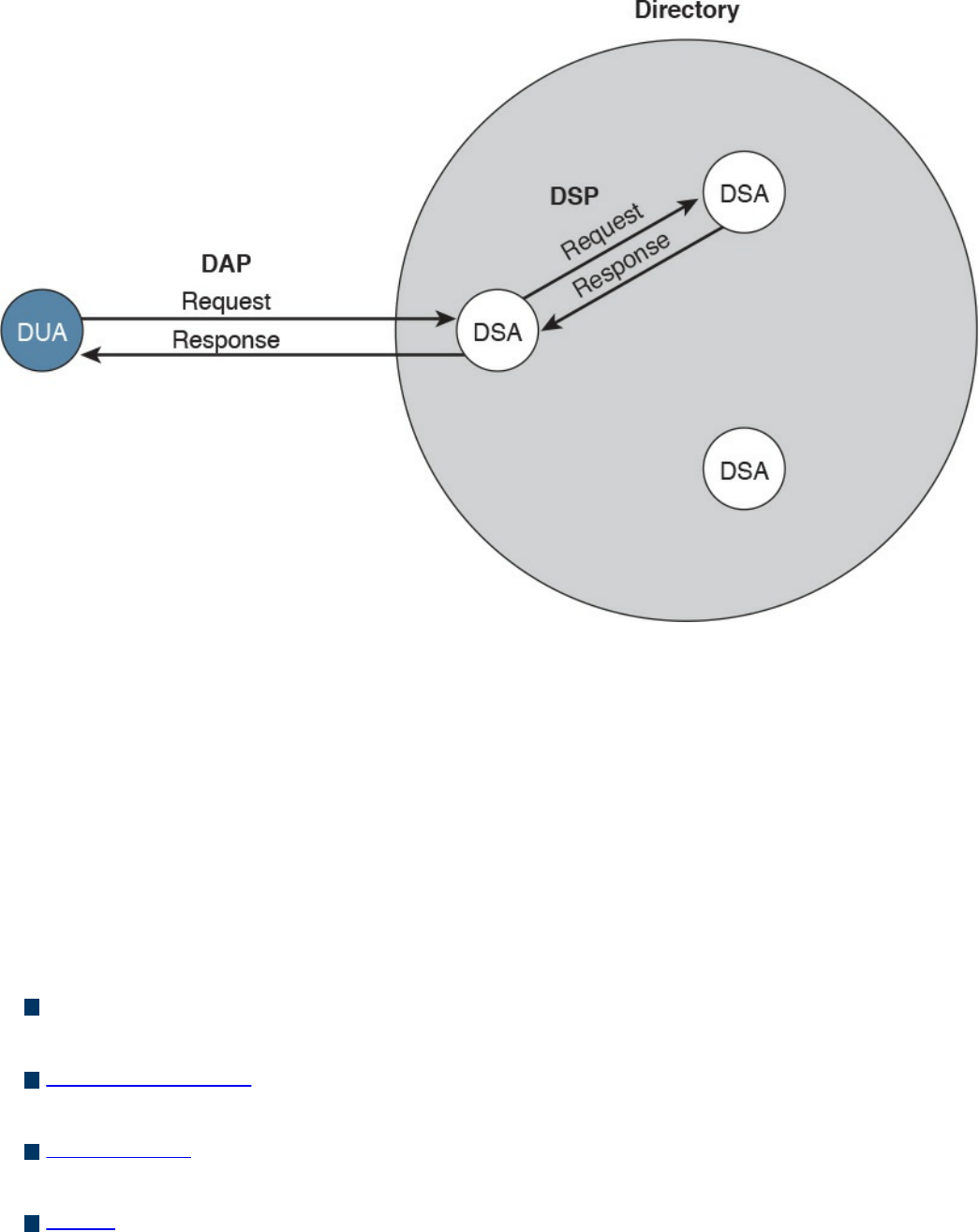

X.500 and X.509v3 Certificates

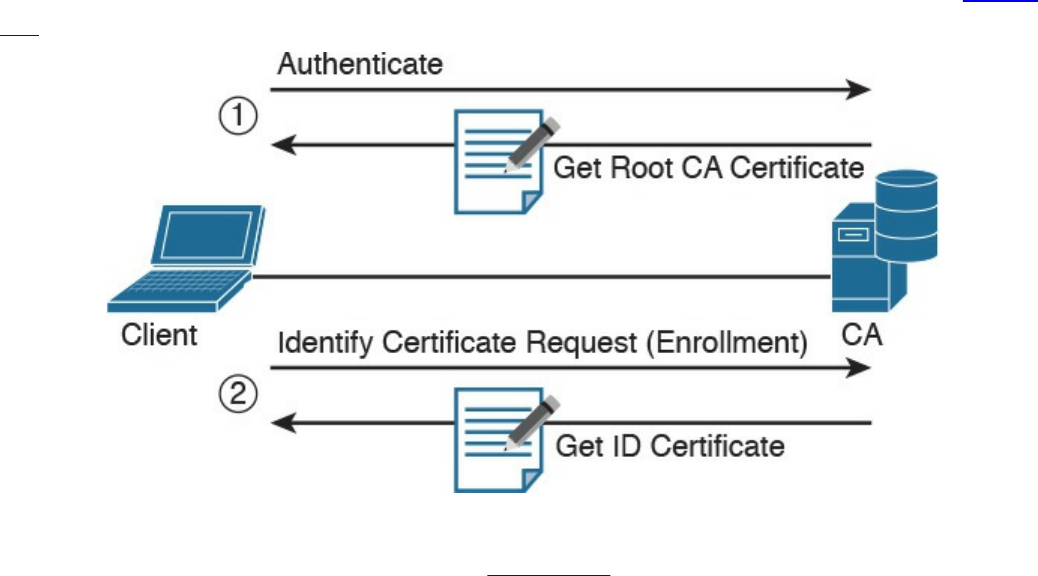

Authenticating and Enrolling with the CA

Public Key Cryptography Standards

Simple Certificate Enrollment Protocol

Revoking Digital Certificates

Using Digital Certificates

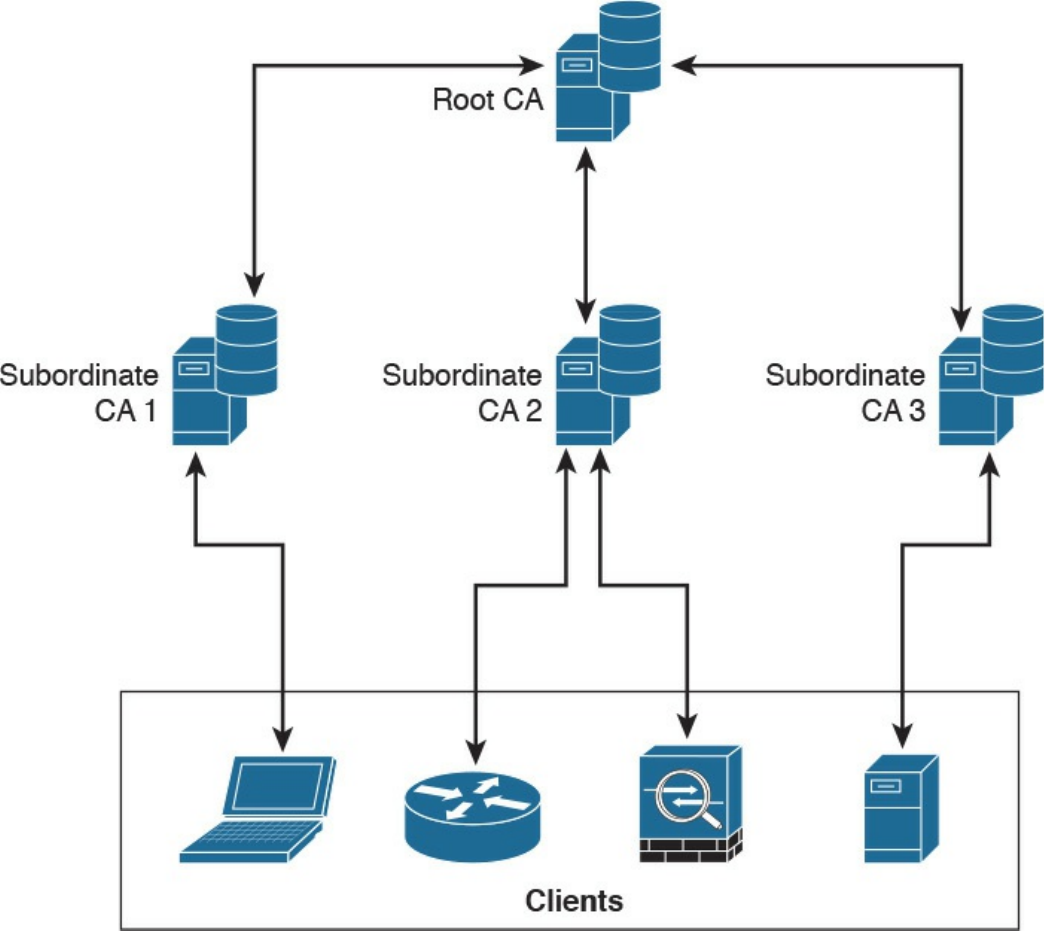

PKI Topologies

Single Root CA

Hierarchical CA with Subordinate CAs

Cross-certifying CAs

Exam Preparation Tasks

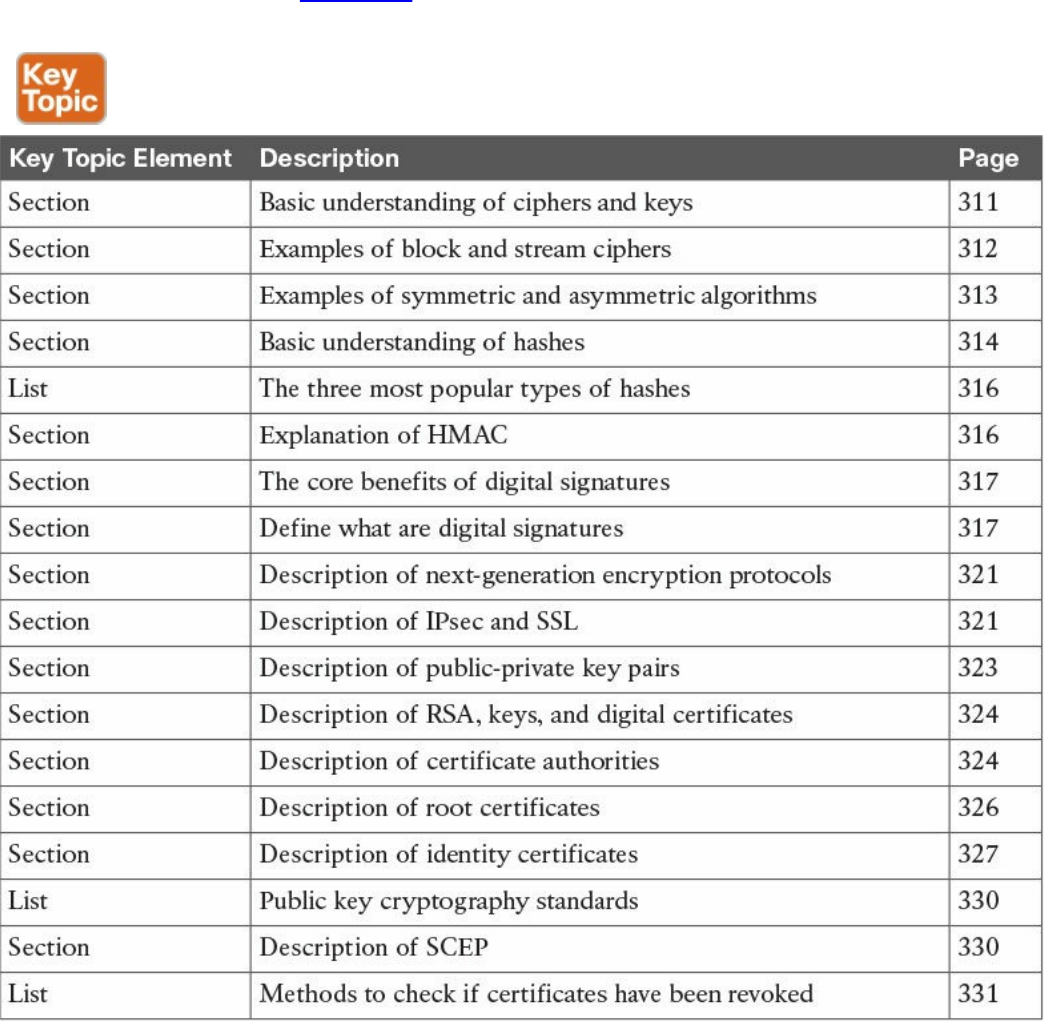

Review All Key Topics

Complete Tables and Lists from Memory

Define Key Terms

Q&A

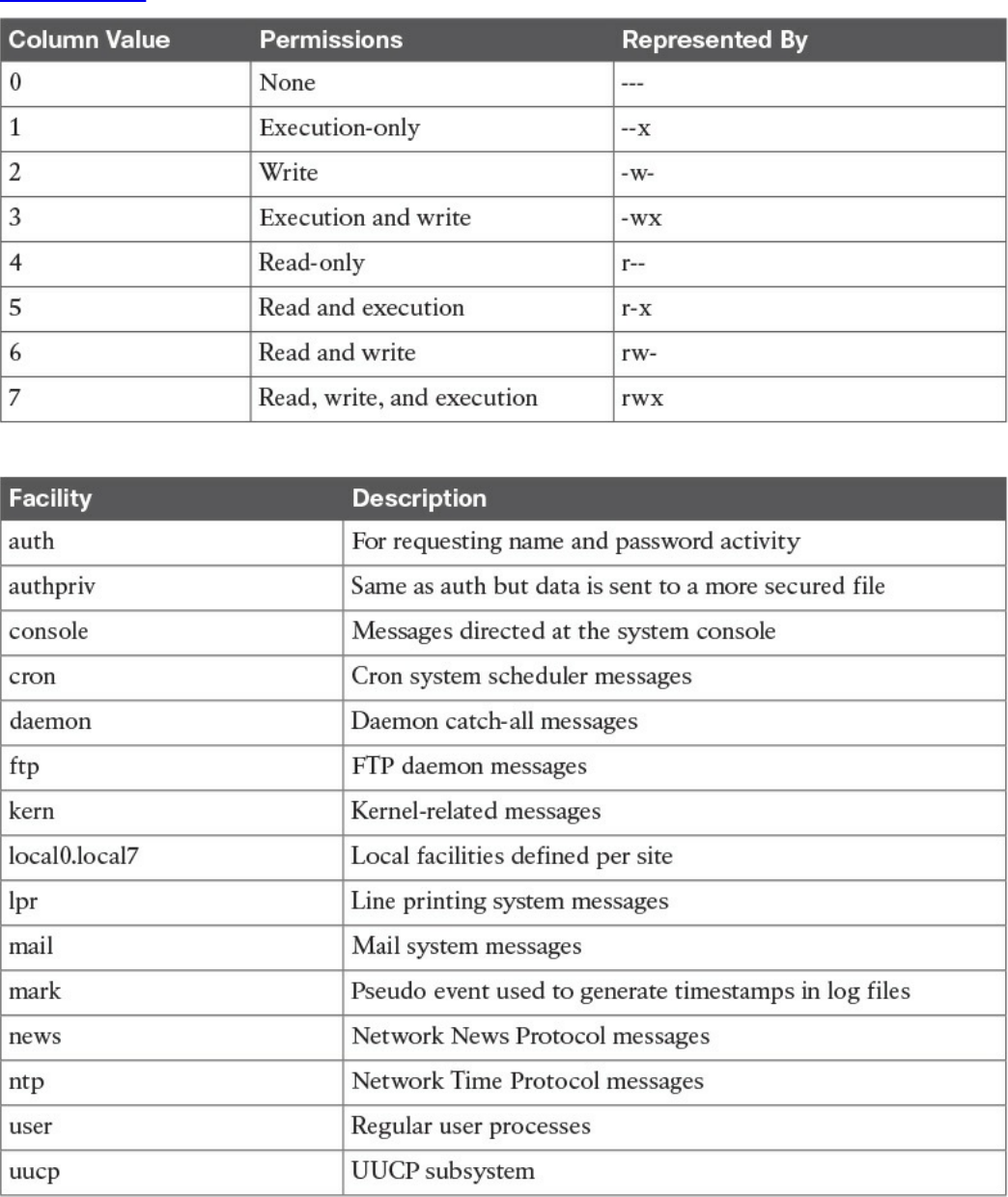

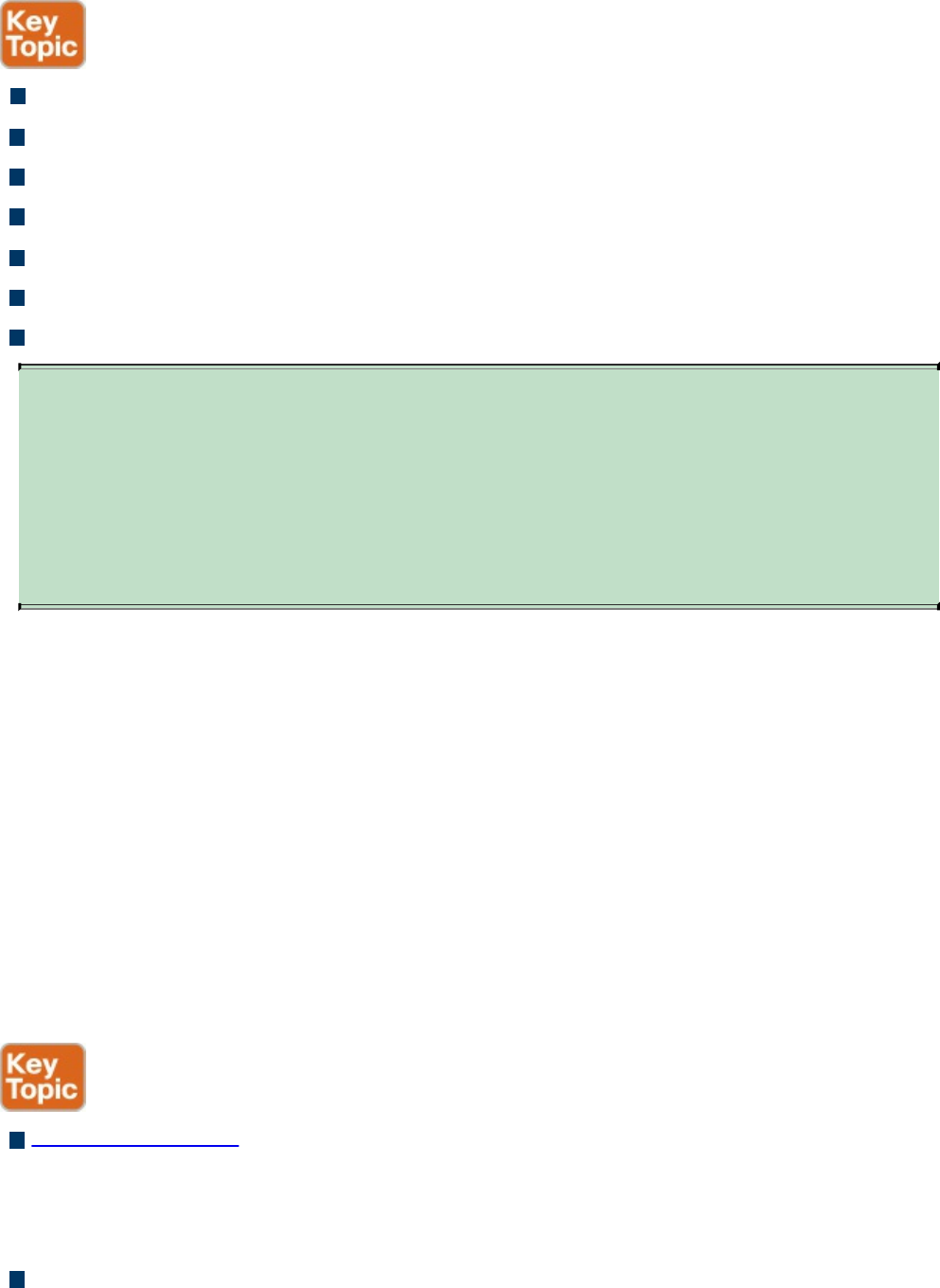

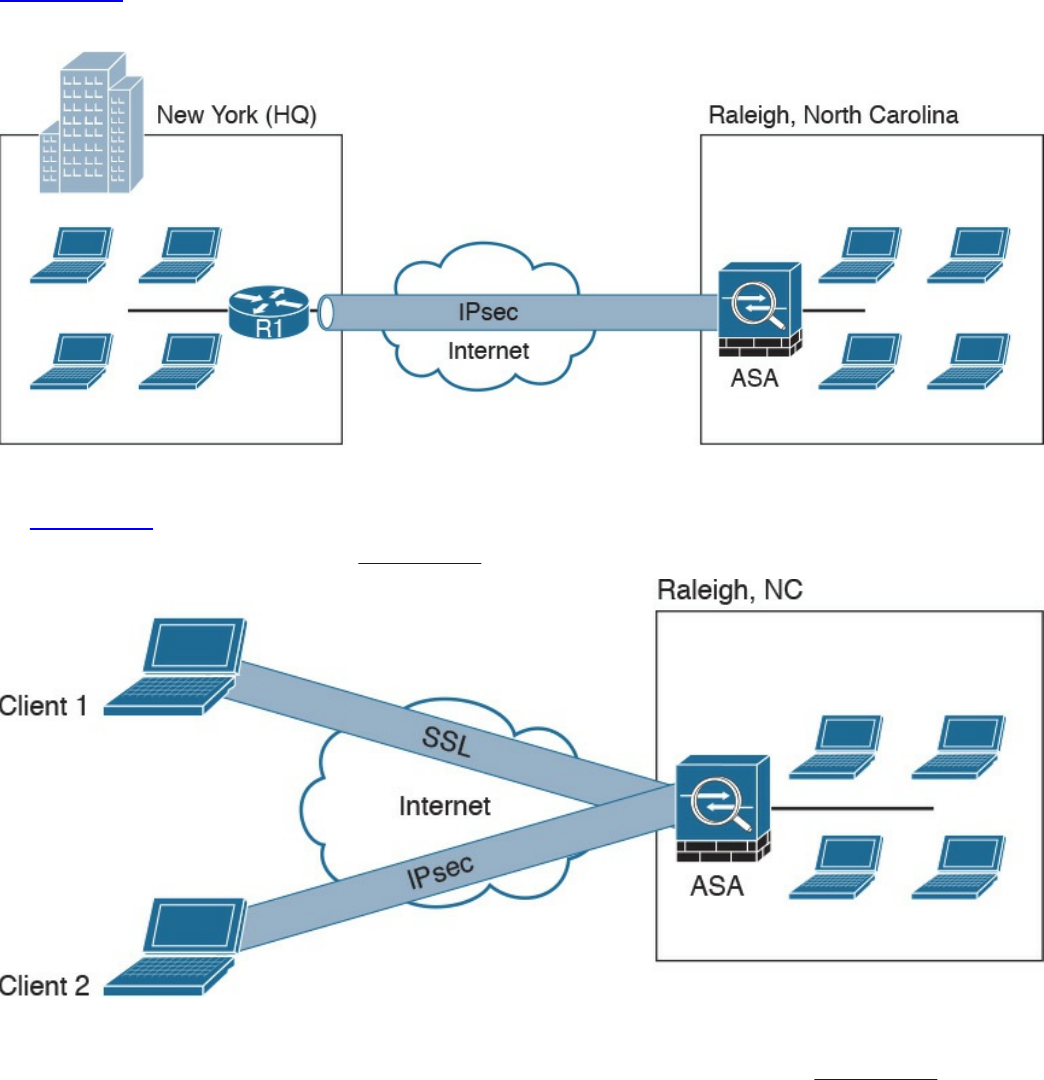

Chapter 7 Introduction to Virtual Private Networks (VPNs)

“Do I Know This Already?” Quiz

Foundation Topics

What Are VPNs?

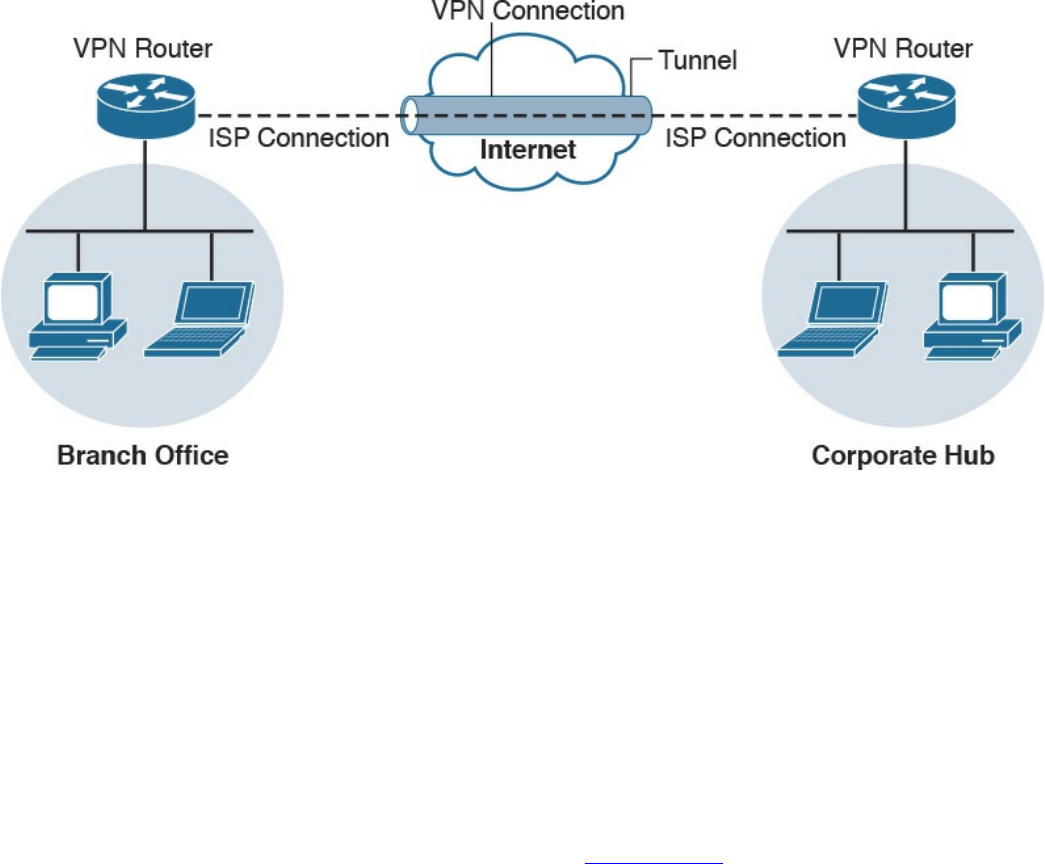

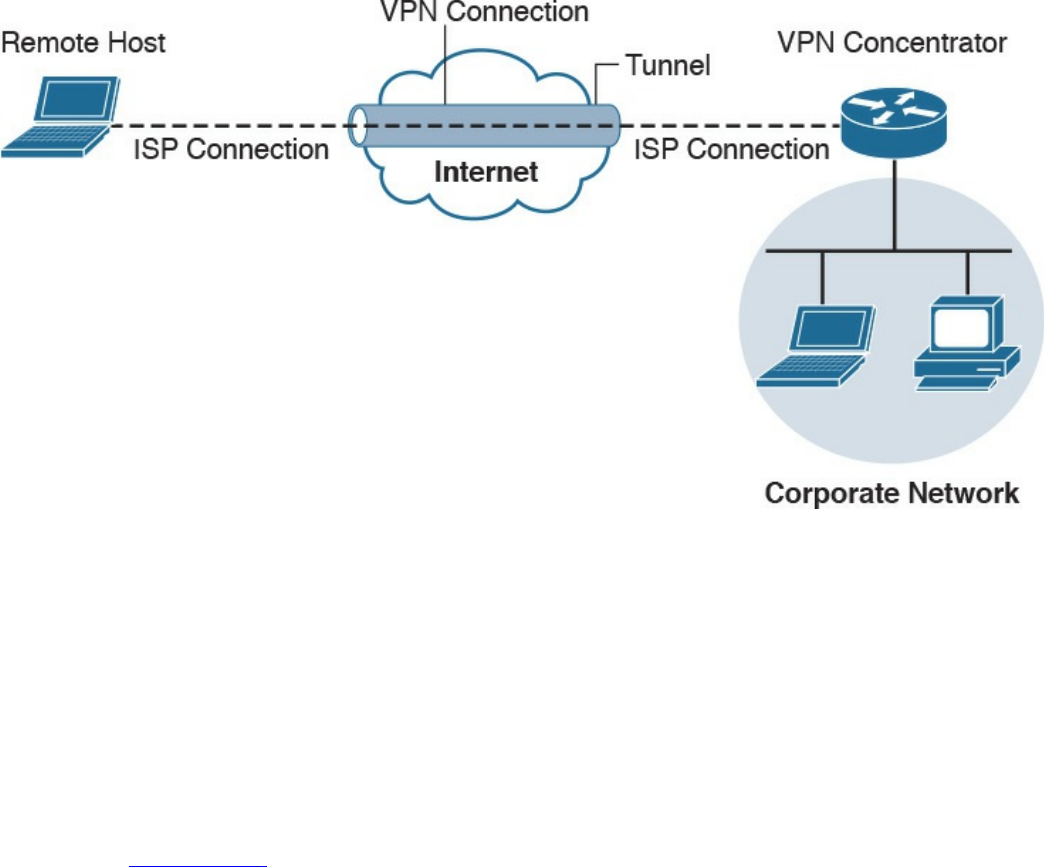

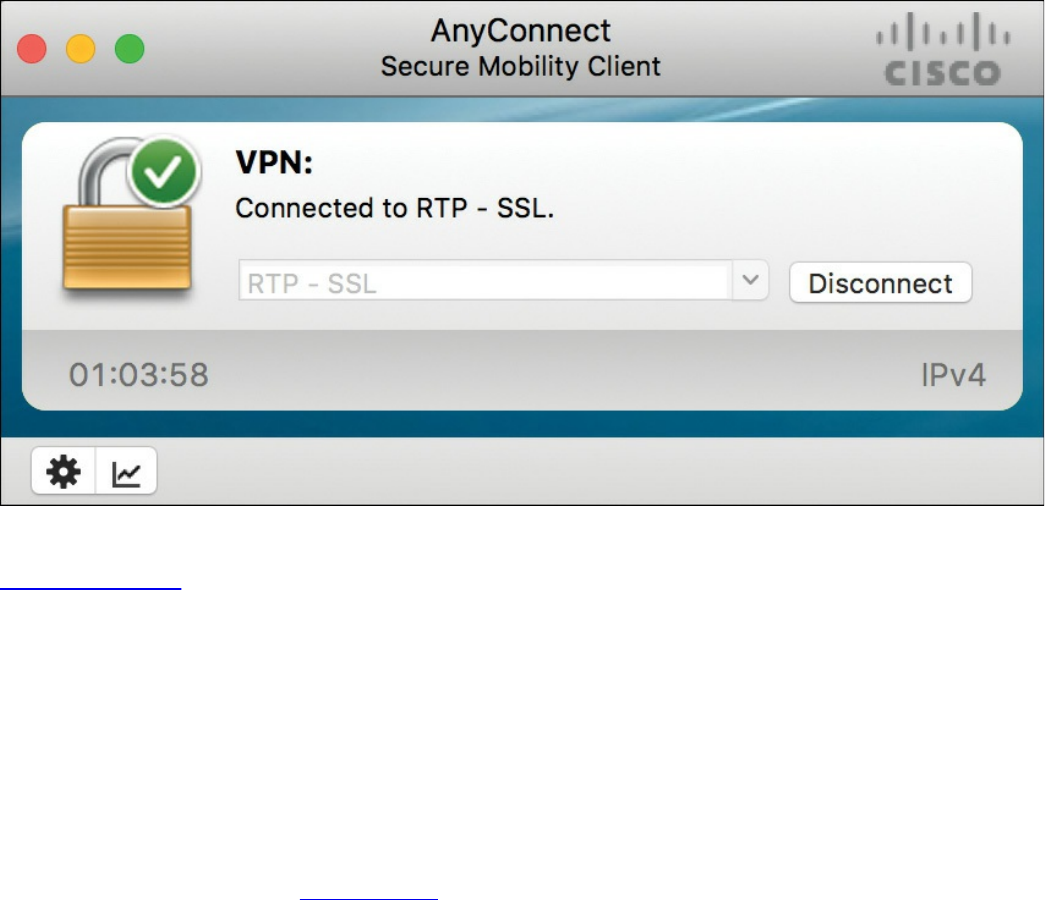

Site-to-site vs. Remote-Access VPNs

An Overview of IPsec

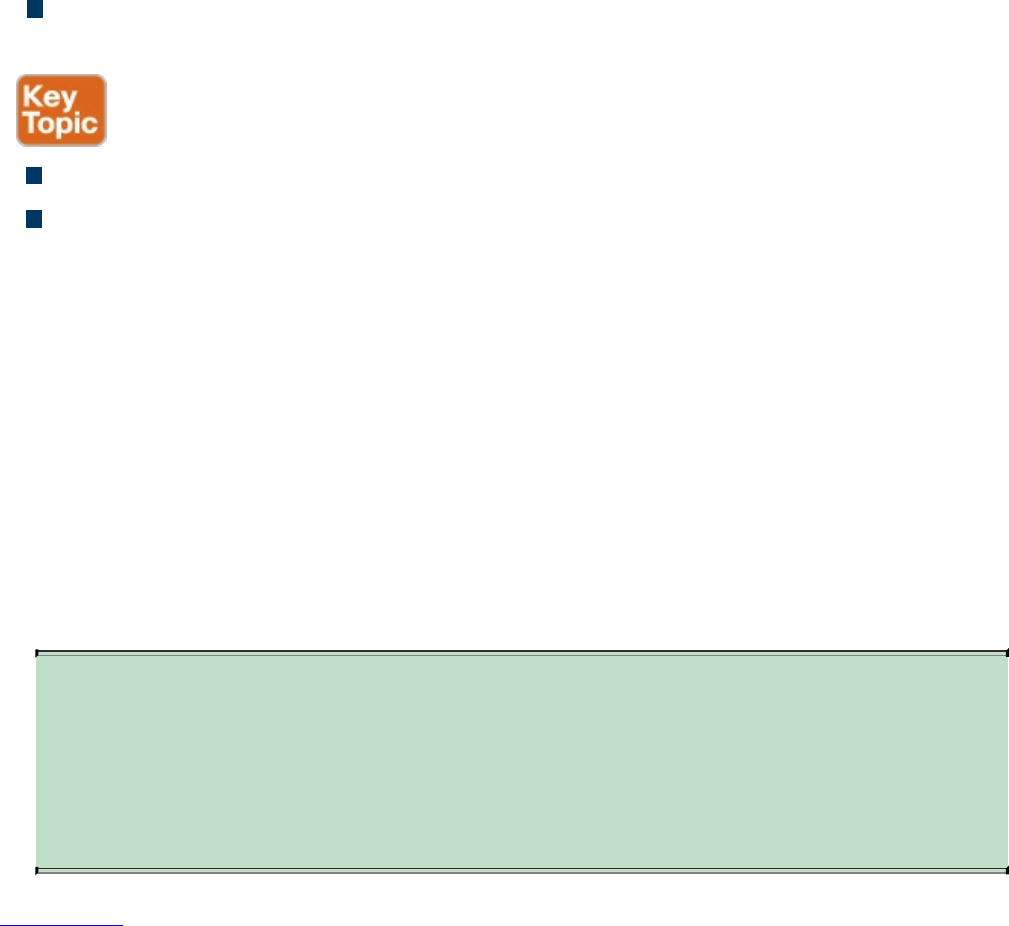

IKEv1 Phase 1

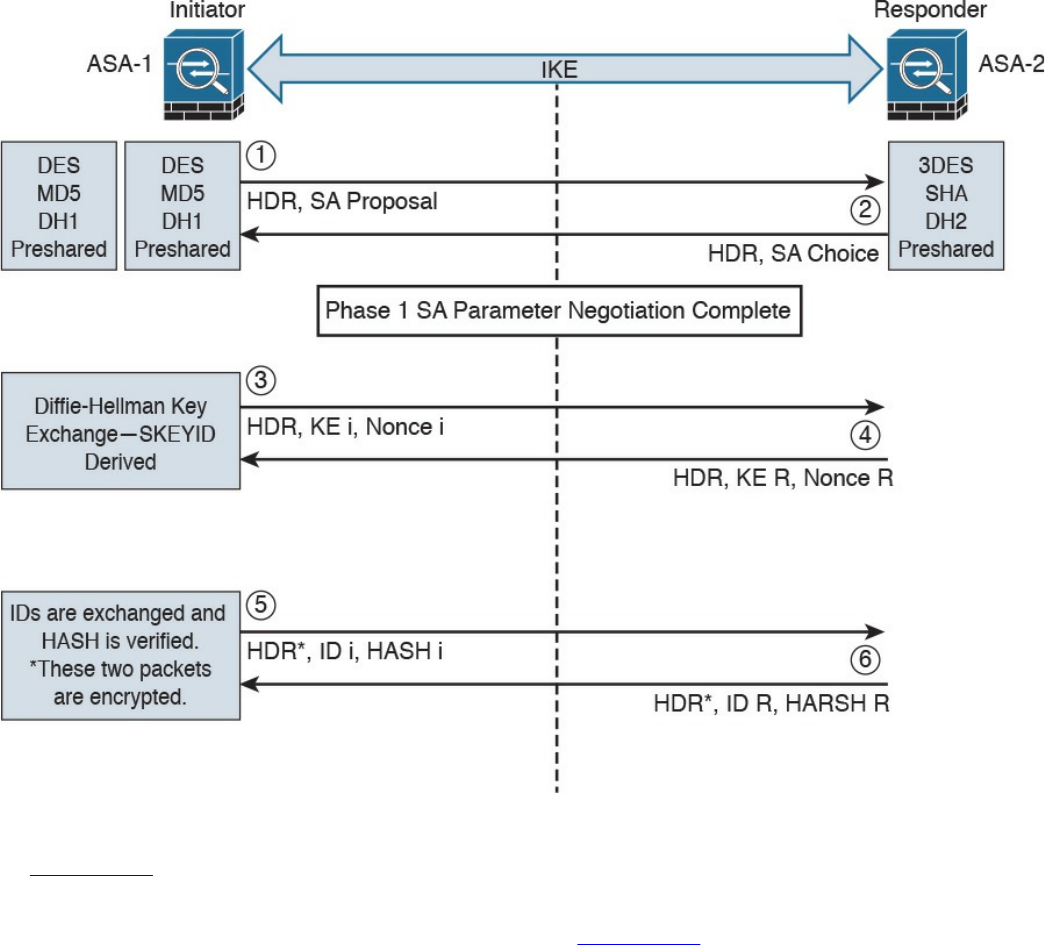

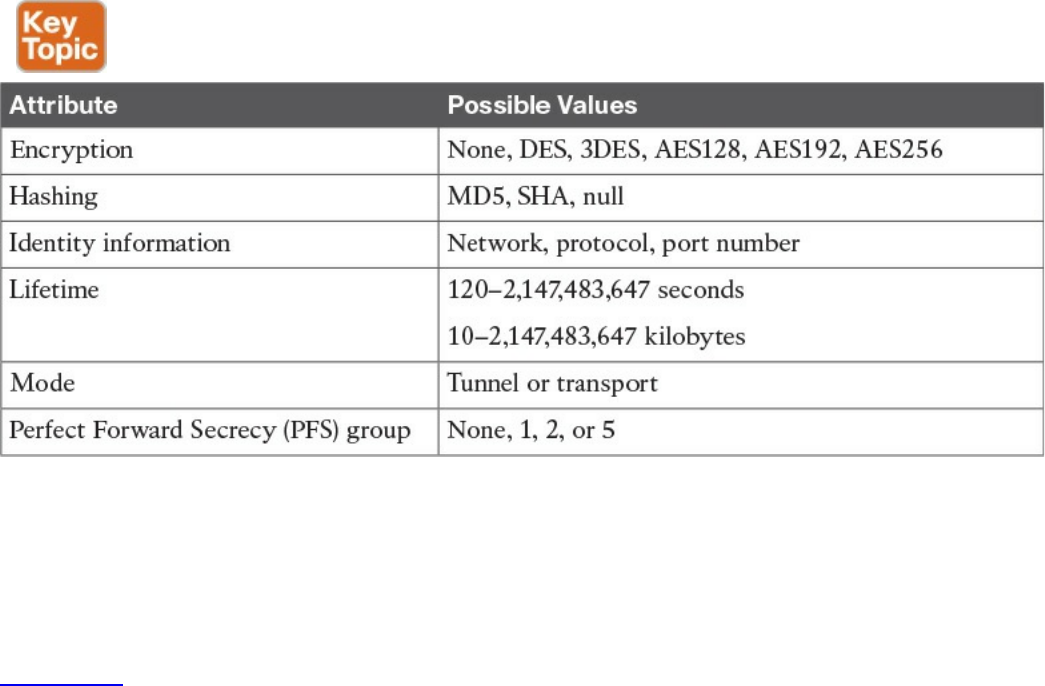

IKEv1 Phase 2

IKEv2

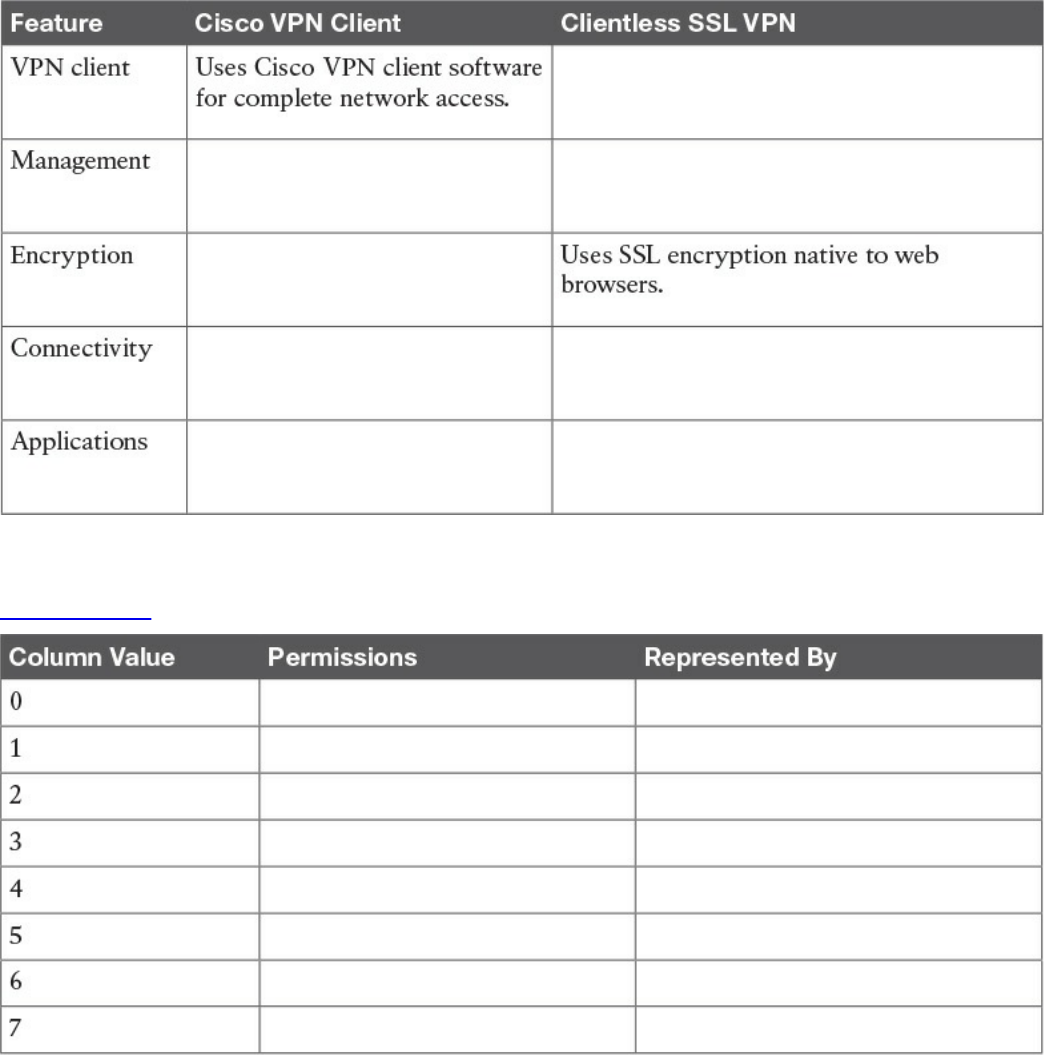

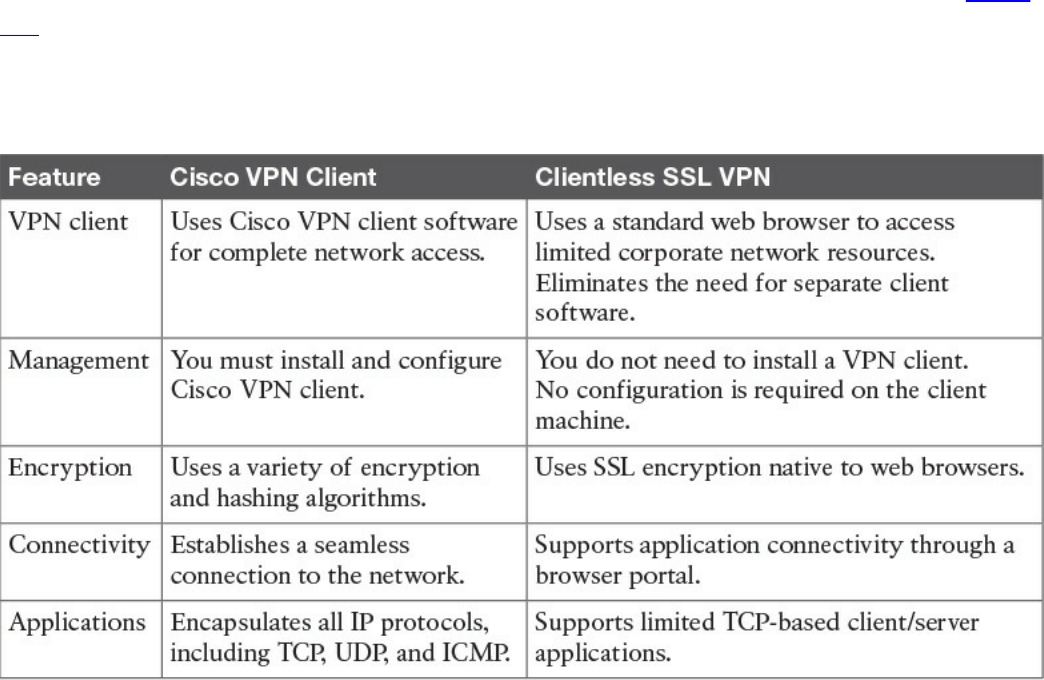

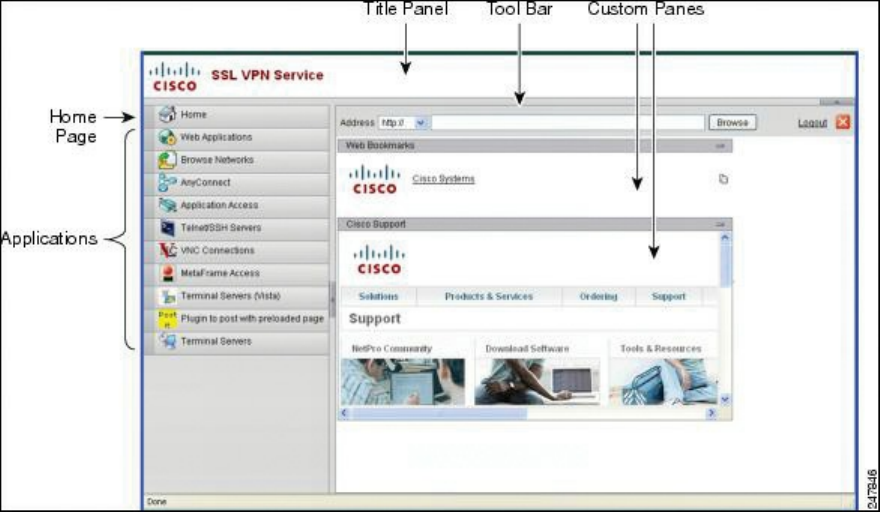

SSL VPNs

SSL VPN Design Considerations

User Connectivity

VPN Device Feature Set

Infrastructure Planning

Implementation Scope

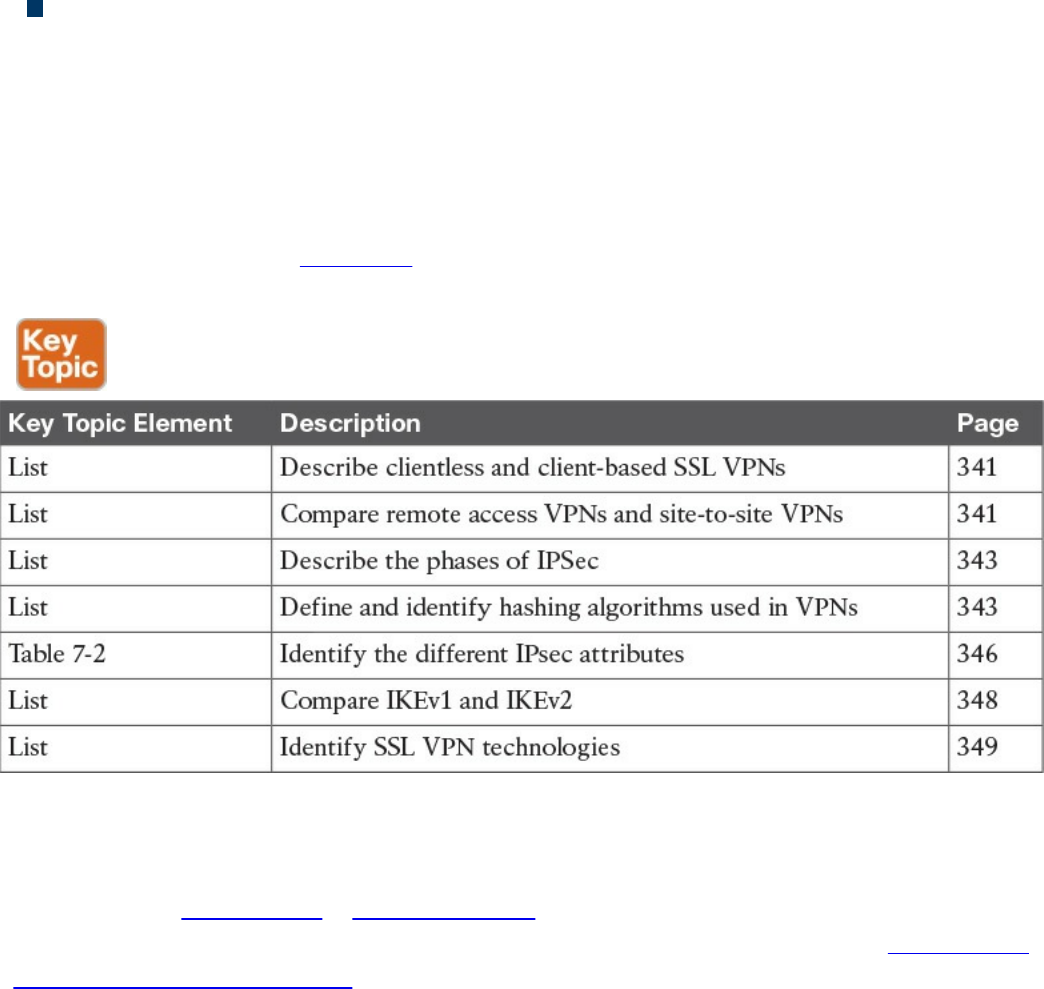

Exam Preparation Tasks

Review All Key Topics

Complete Tables and Lists from Memory

Define Key Terms

Q&A

Part IV Host-Based Analysis

Chapter 8 Windows-Based Analysis

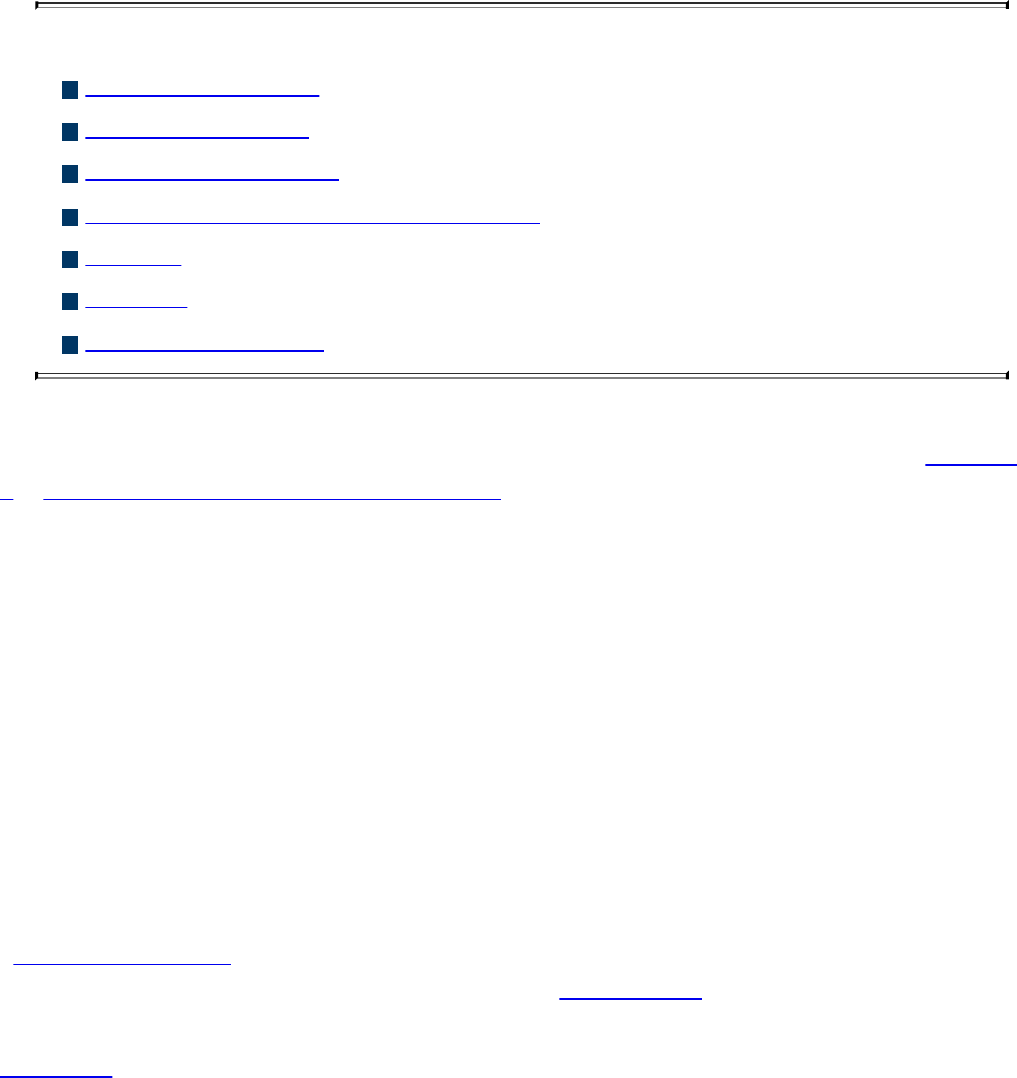

“Do I Know This Already?” Quiz

Foundation Topics

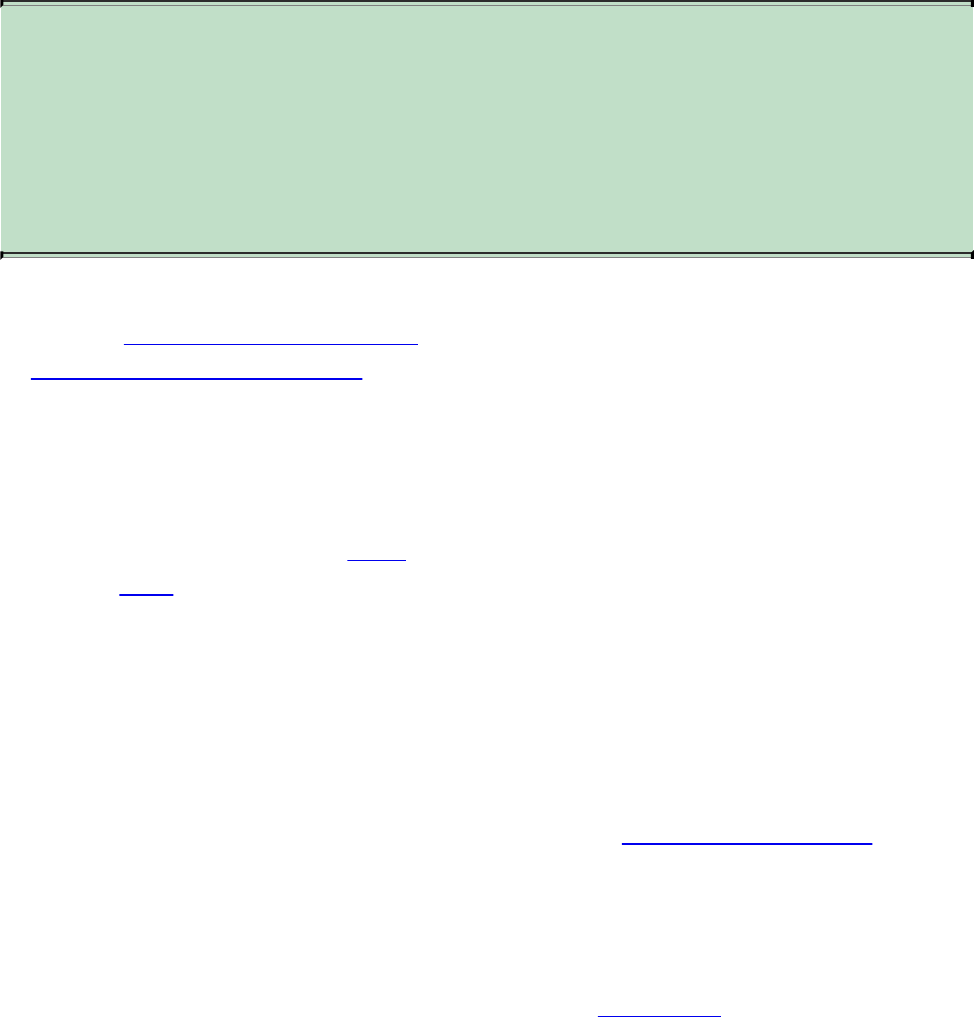

Process and Threads

Memory Allocation

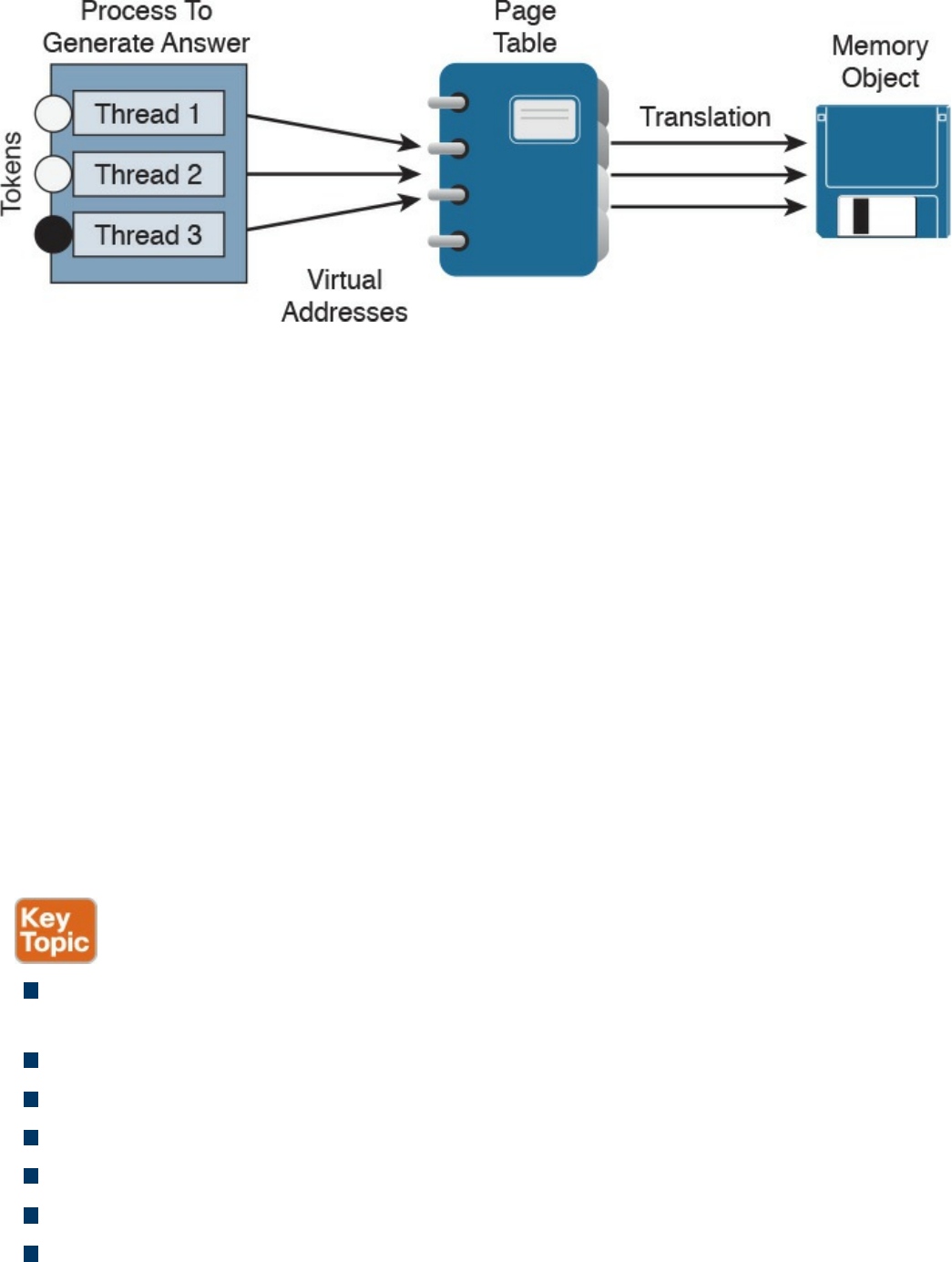

Windows Registration

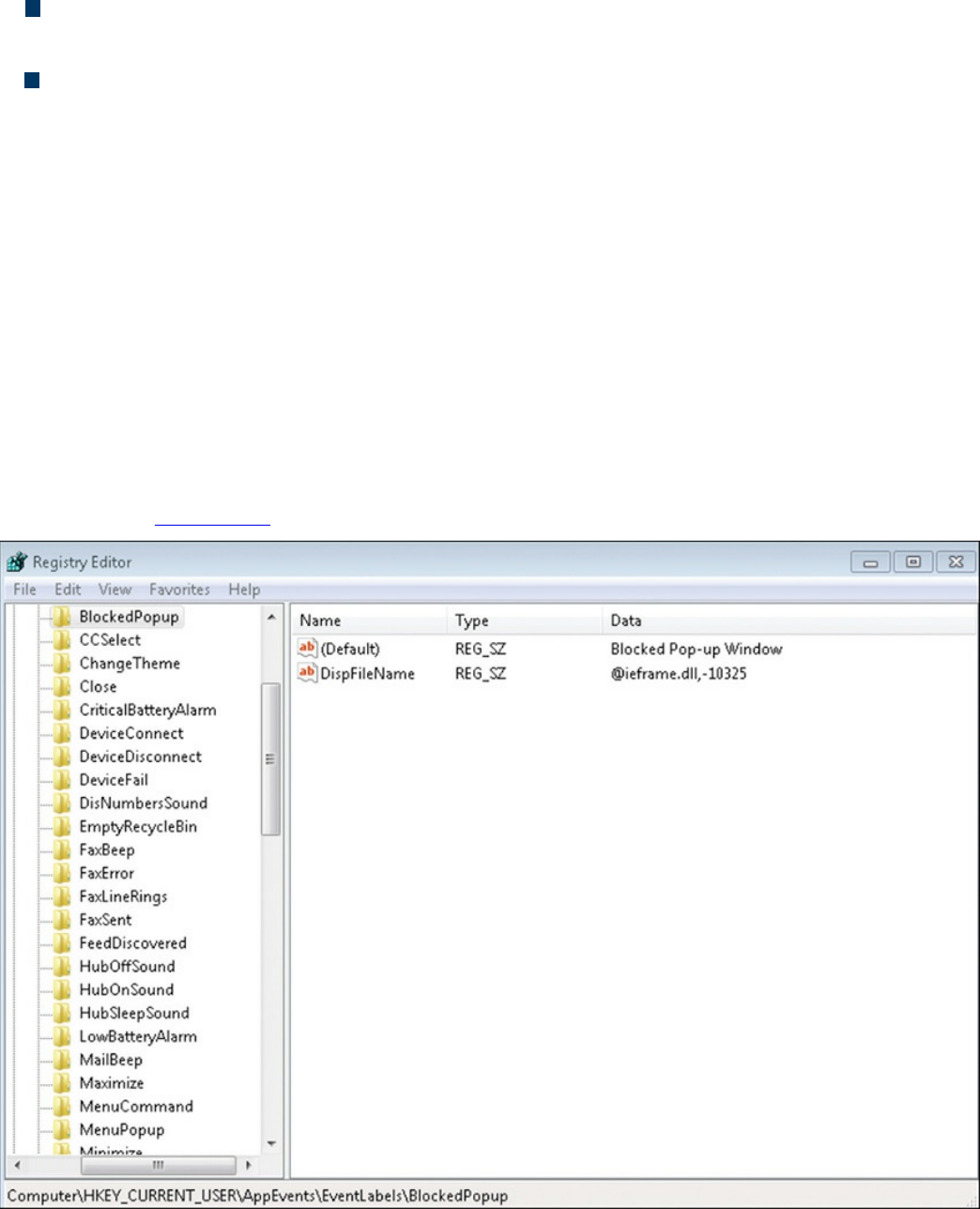

Windows Management Instrumentation

Handles

Services

Windows Event Logs

Exam Preparation Tasks

Review All Key Topics

Define Key Terms

Q&A

References and Further Reading

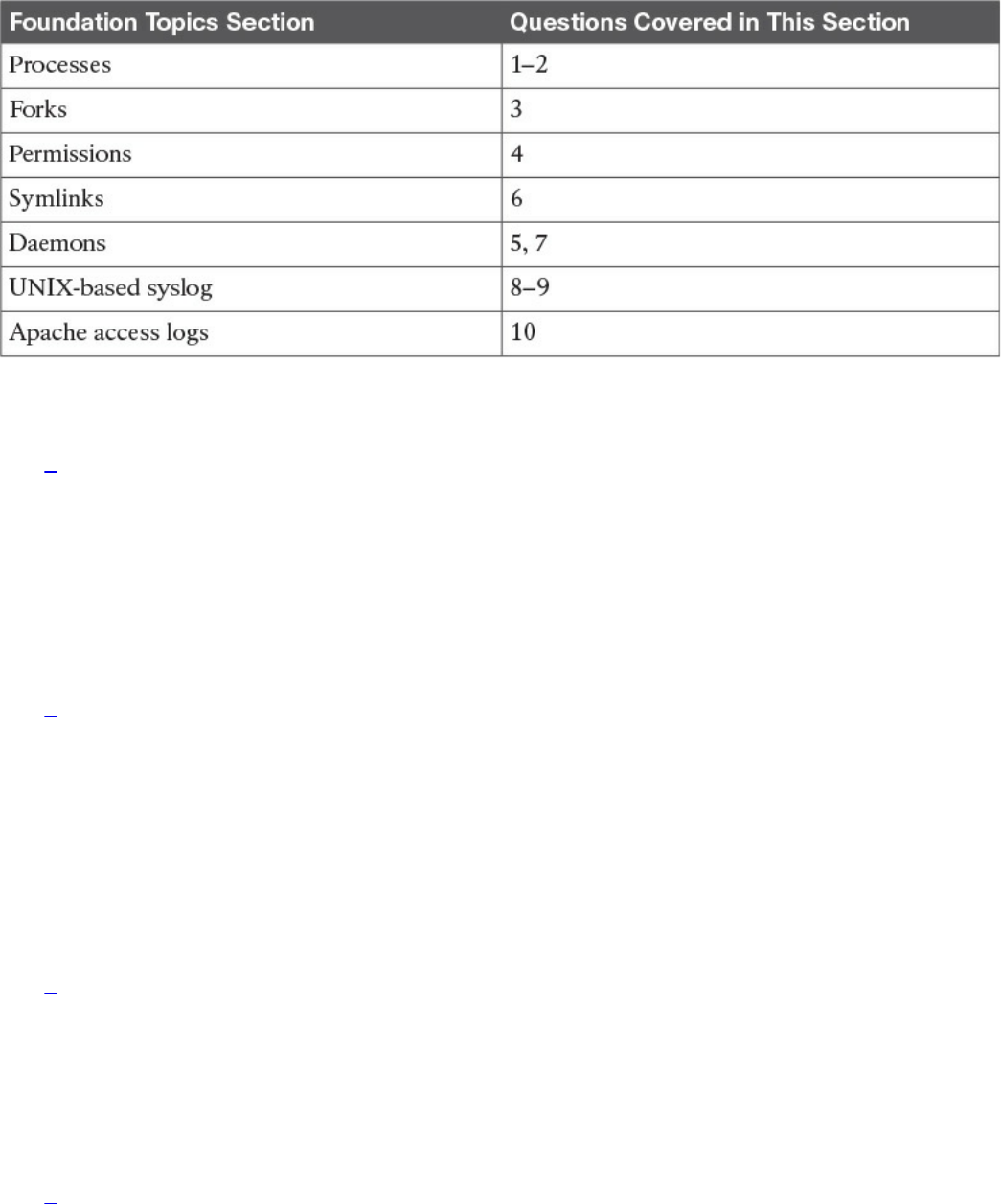

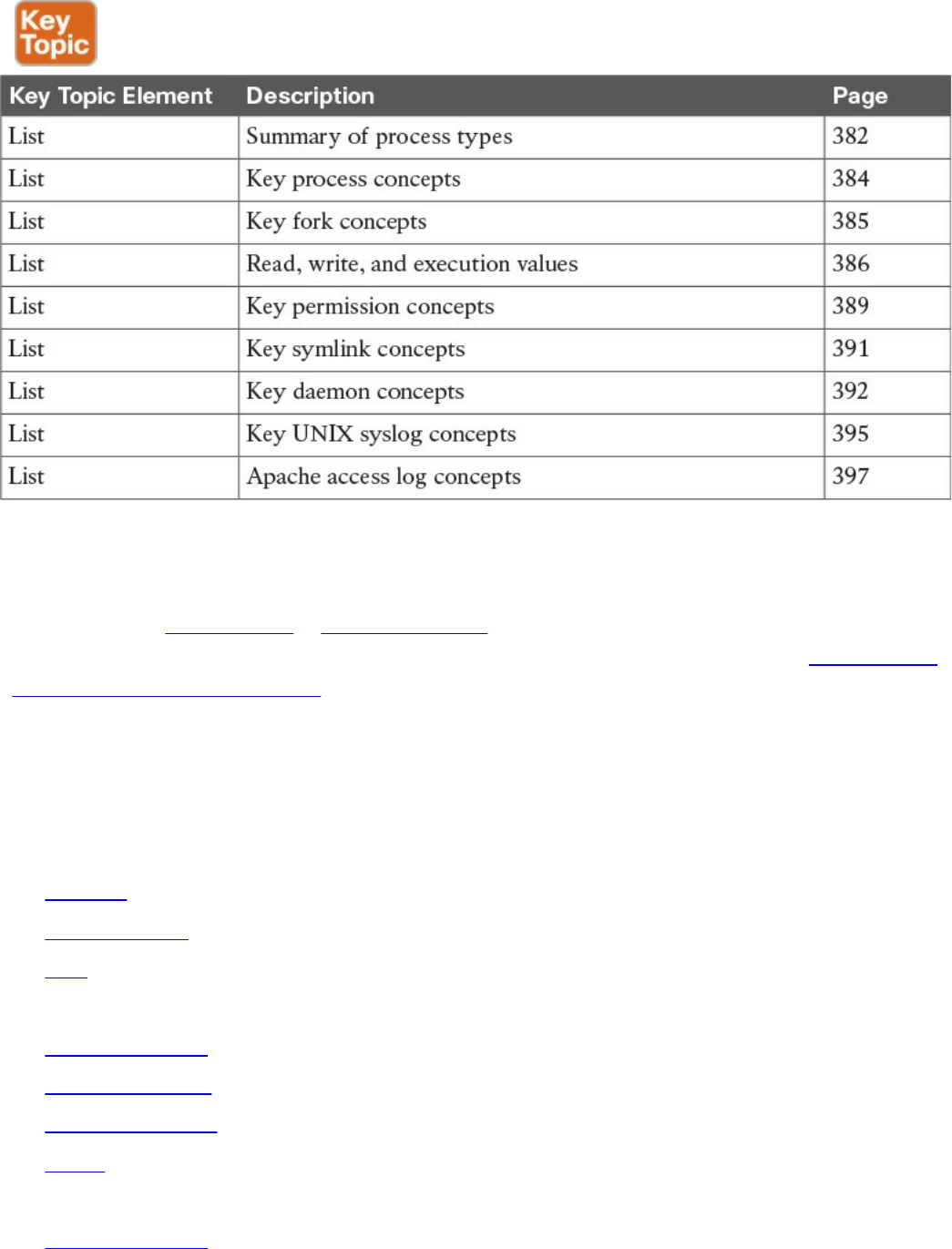

Chapter 9 Linux- and Mac OS X–Based Analysis

“Do I Know This Already?” Quiz

Foundation Topics

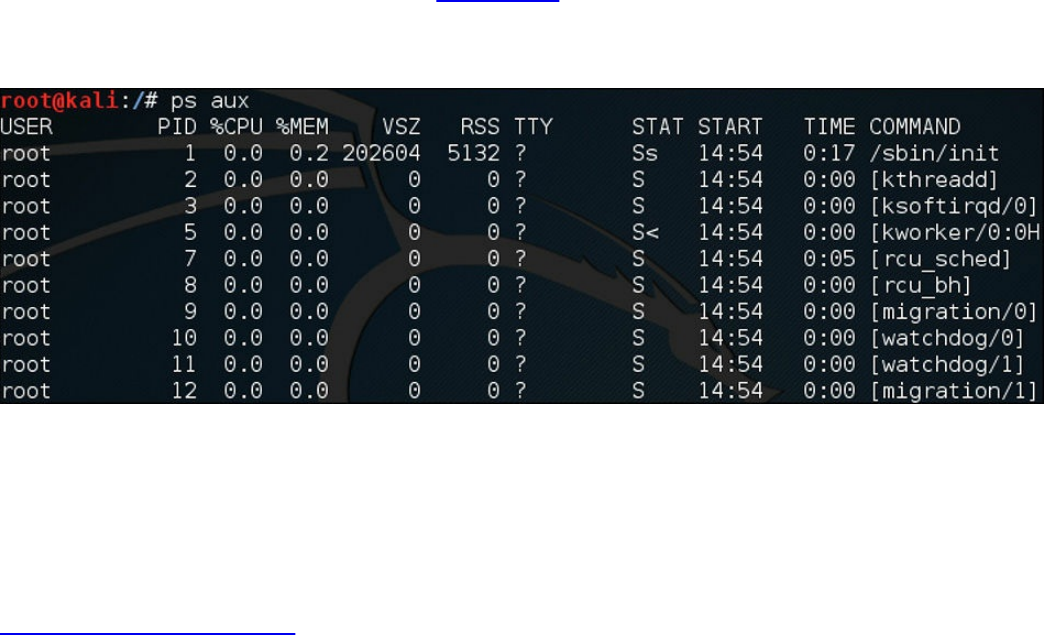

Processes

Forks

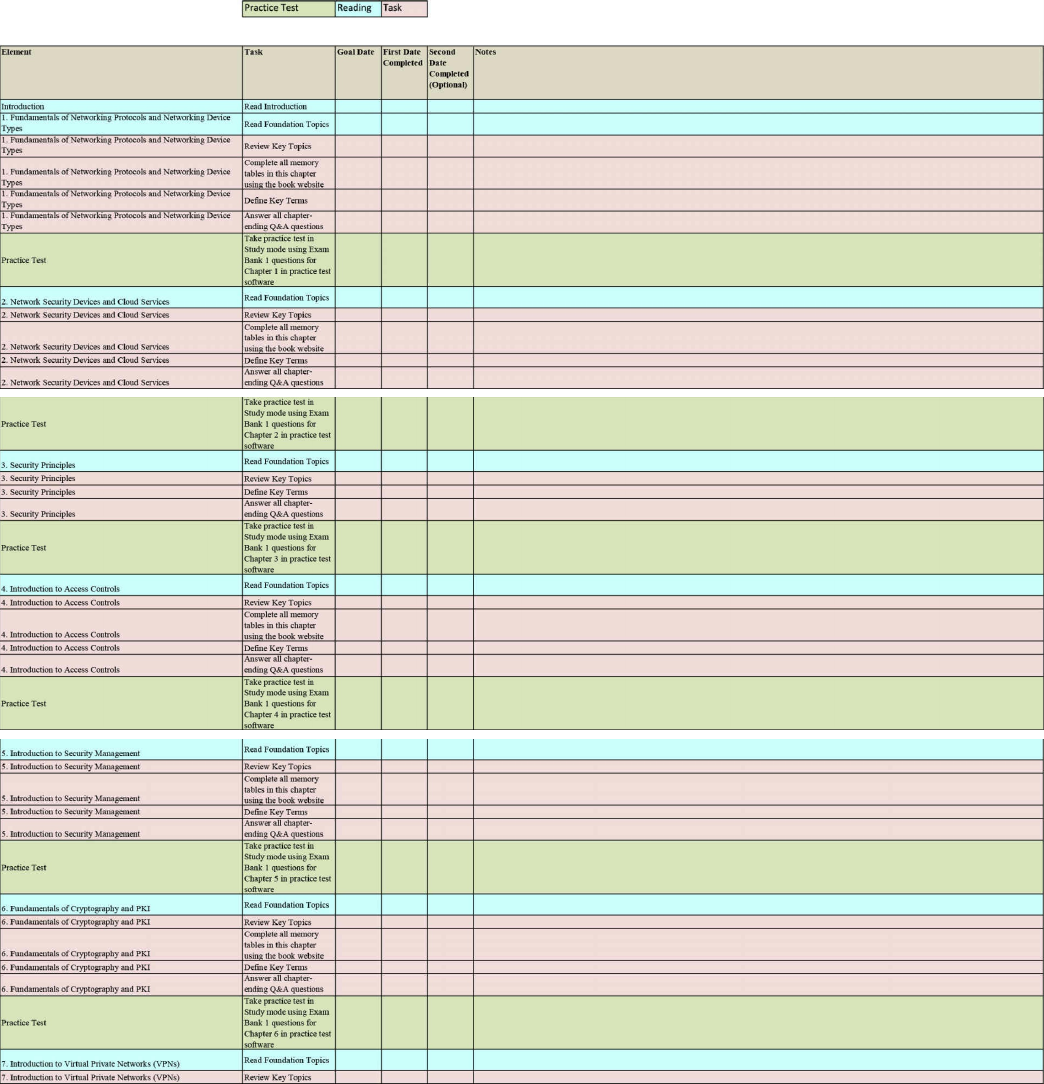

Permissions

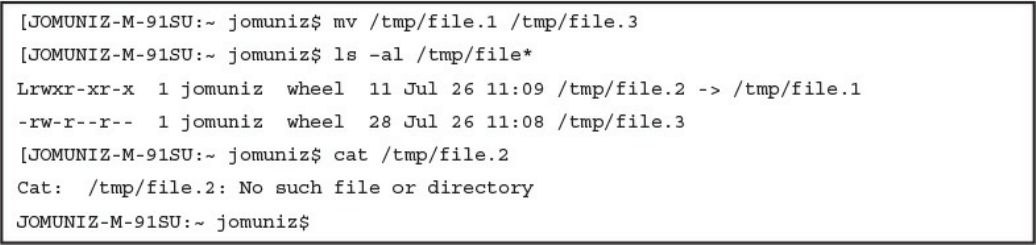

Symlinks

Daemons

www.hellodigi.ir

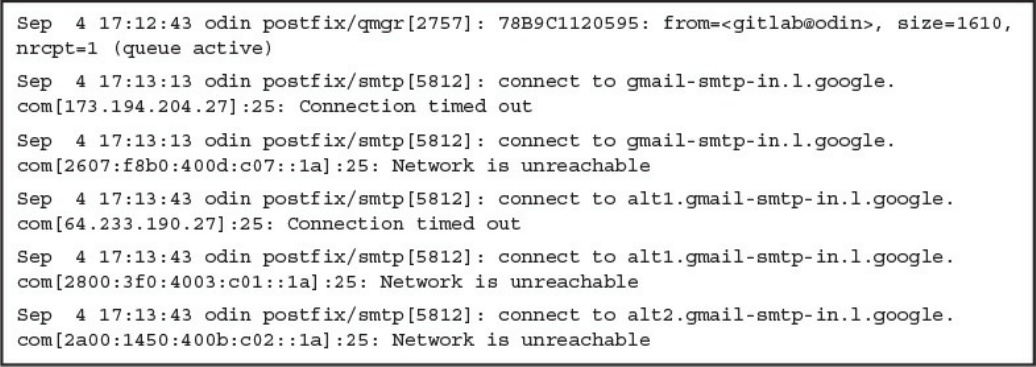

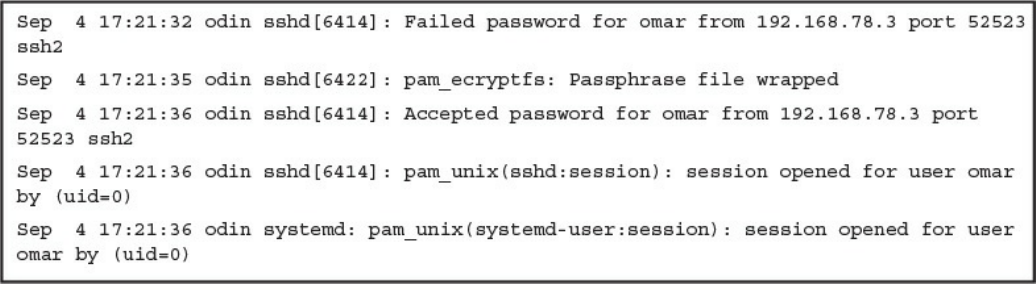

UNIX-Based Syslog

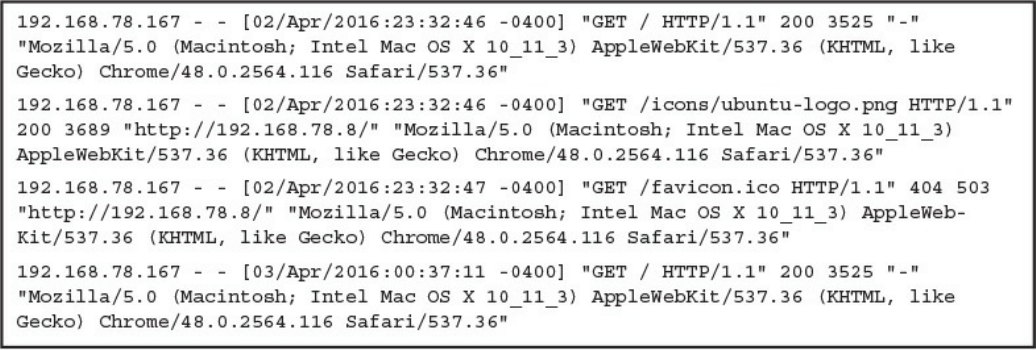

Apache Access Logs

Exam Preparation Tasks

Review All Key Topics

Complete Tables and Lists from Memory

Define Key Terms

Q&A

References and Further Reading

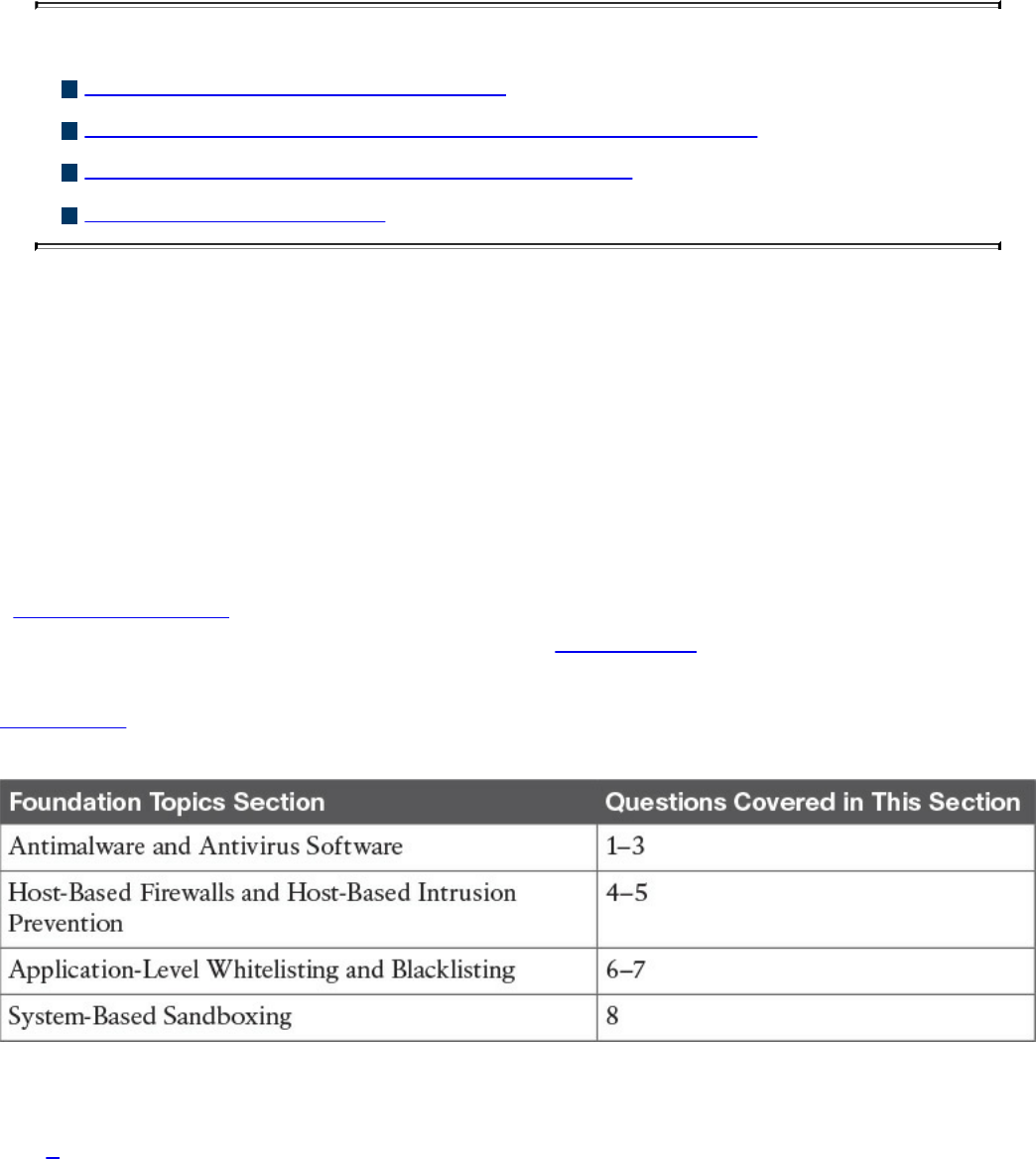

Chapter 10 Endpoint Security Technologies

“Do I Know This Already?” Quiz

Foundation Topics

Antimalware and Antivirus Software

Host-Based Firewalls and Host-Based Intrusion Prevention

Application-Level Whitelisting and Blacklisting

System-Based Sandboxing

Exam Preparation Tasks

Review All Key Topics

Complete Tables and Lists from Memory

Define Key Terms

Q&A

Part V Security Monitoring and Attack Methods

Chapter 11 Network and Host Telemetry

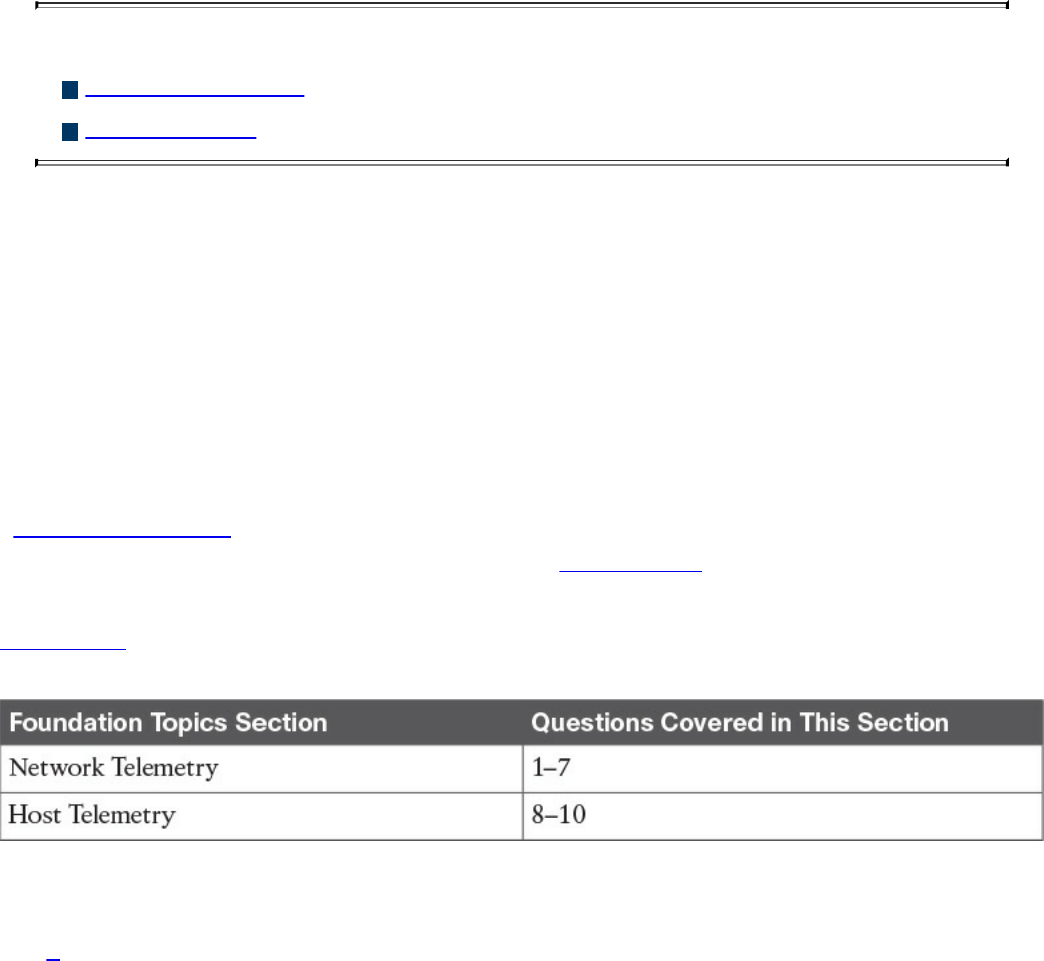

“Do I Know This Already?” Quiz

Foundation Topics

Network Telemetry

Network Infrastructure Logs

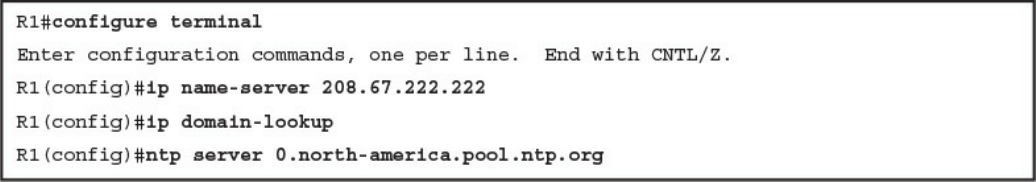

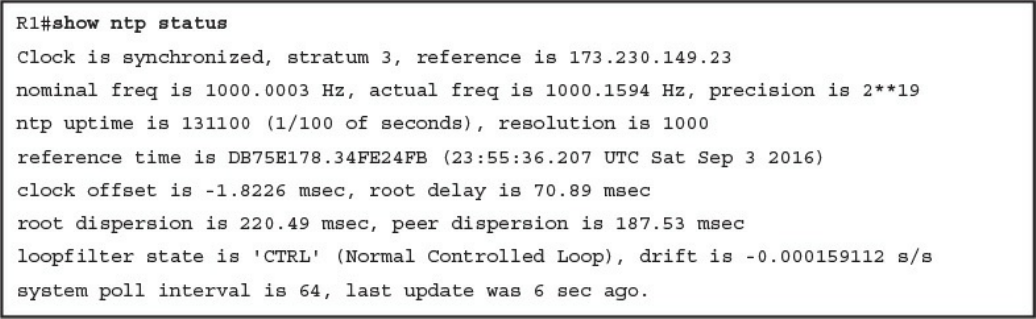

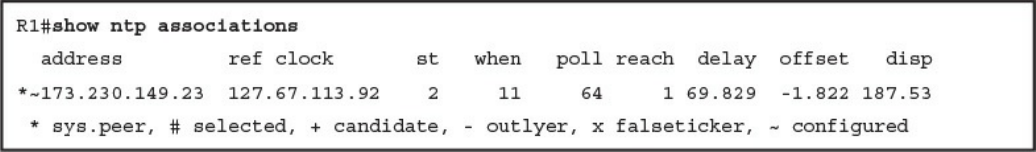

Network Time Protocol and Why It Is Important

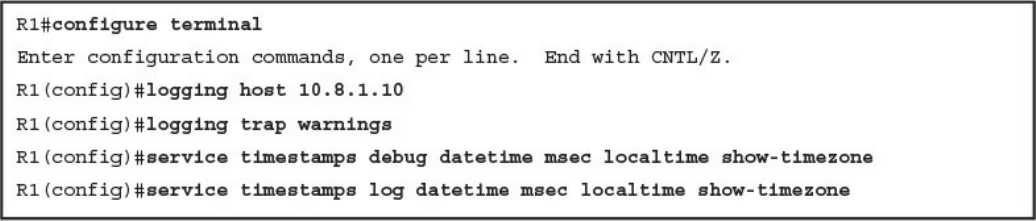

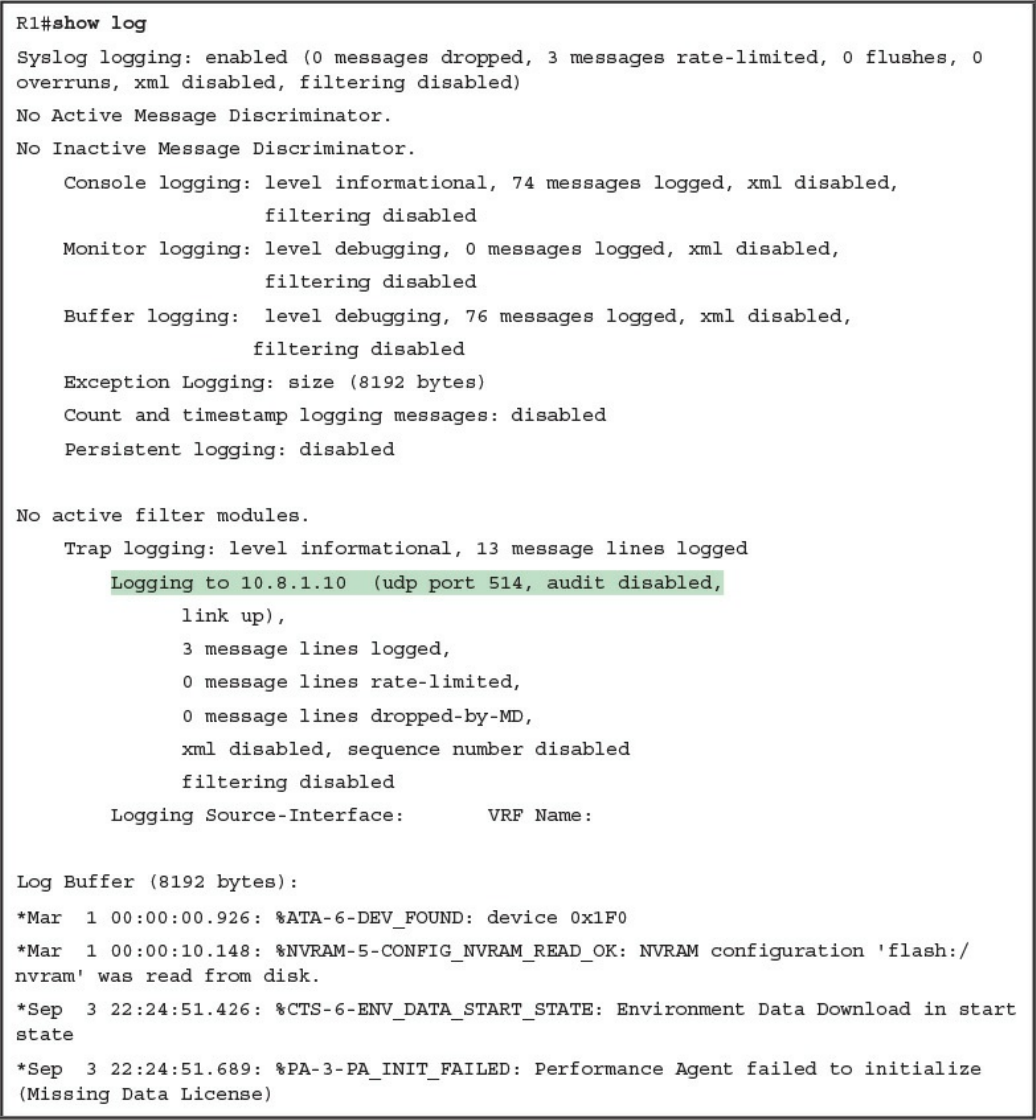

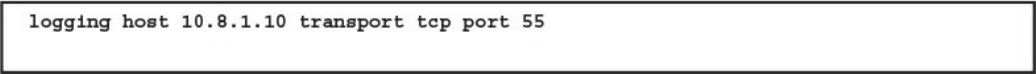

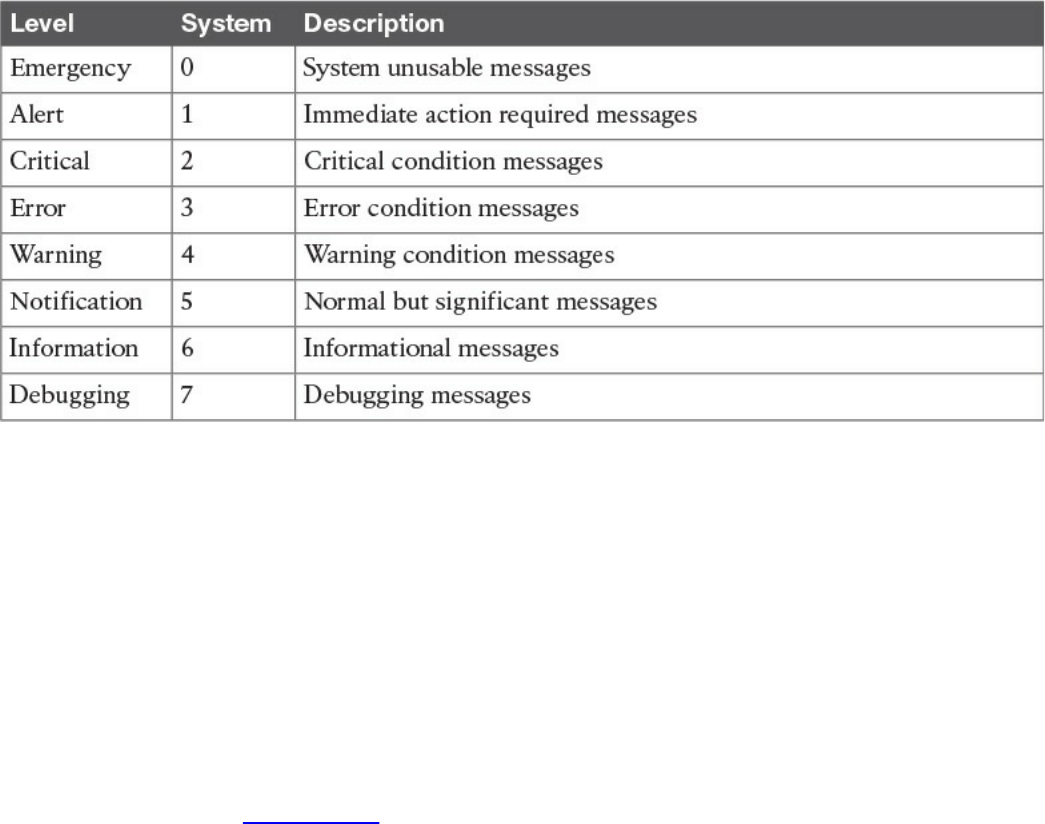

Configuring Syslog in a Cisco Router or Switch

Traditional Firewall Logs

Console Logging

Terminal Logging

www.hellodigi.ir

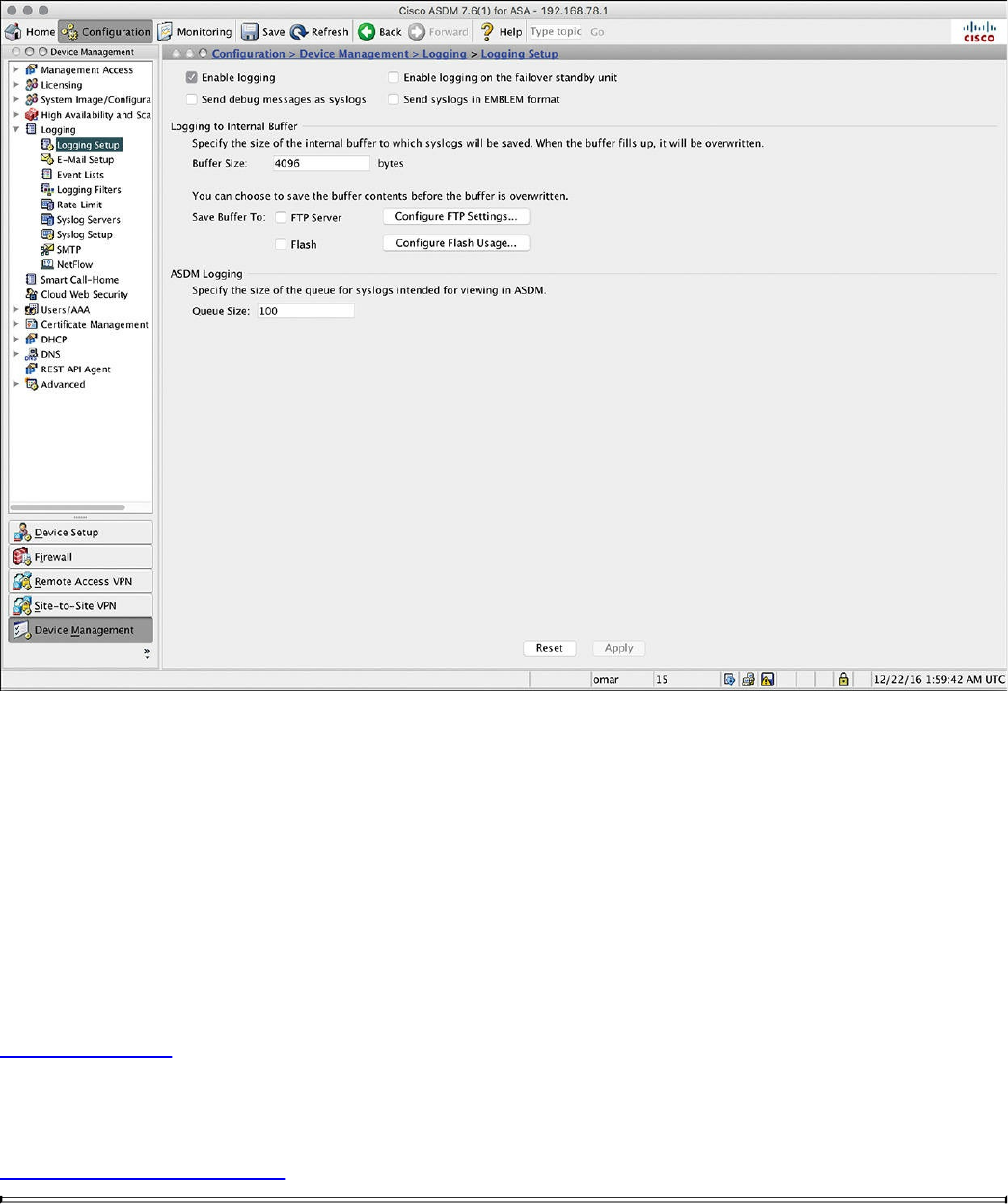

ASDM Logging

Email Logging

Syslog Server Logging

SNMP Trap Logging

Buffered Logging

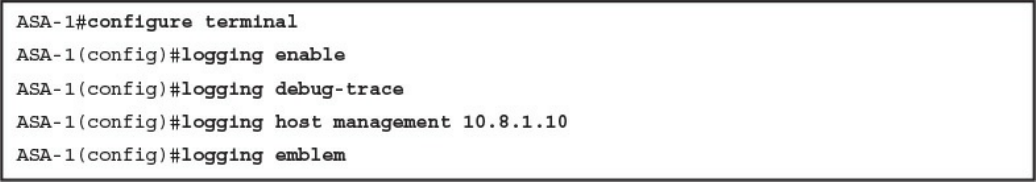

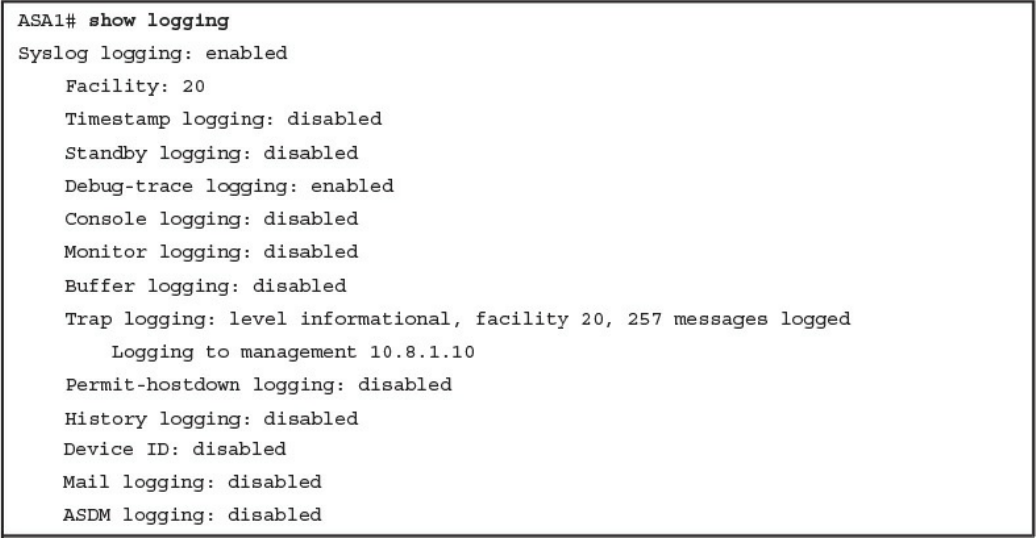

Configuring Logging on the Cisco ASA

Syslog in Large Scale Environments

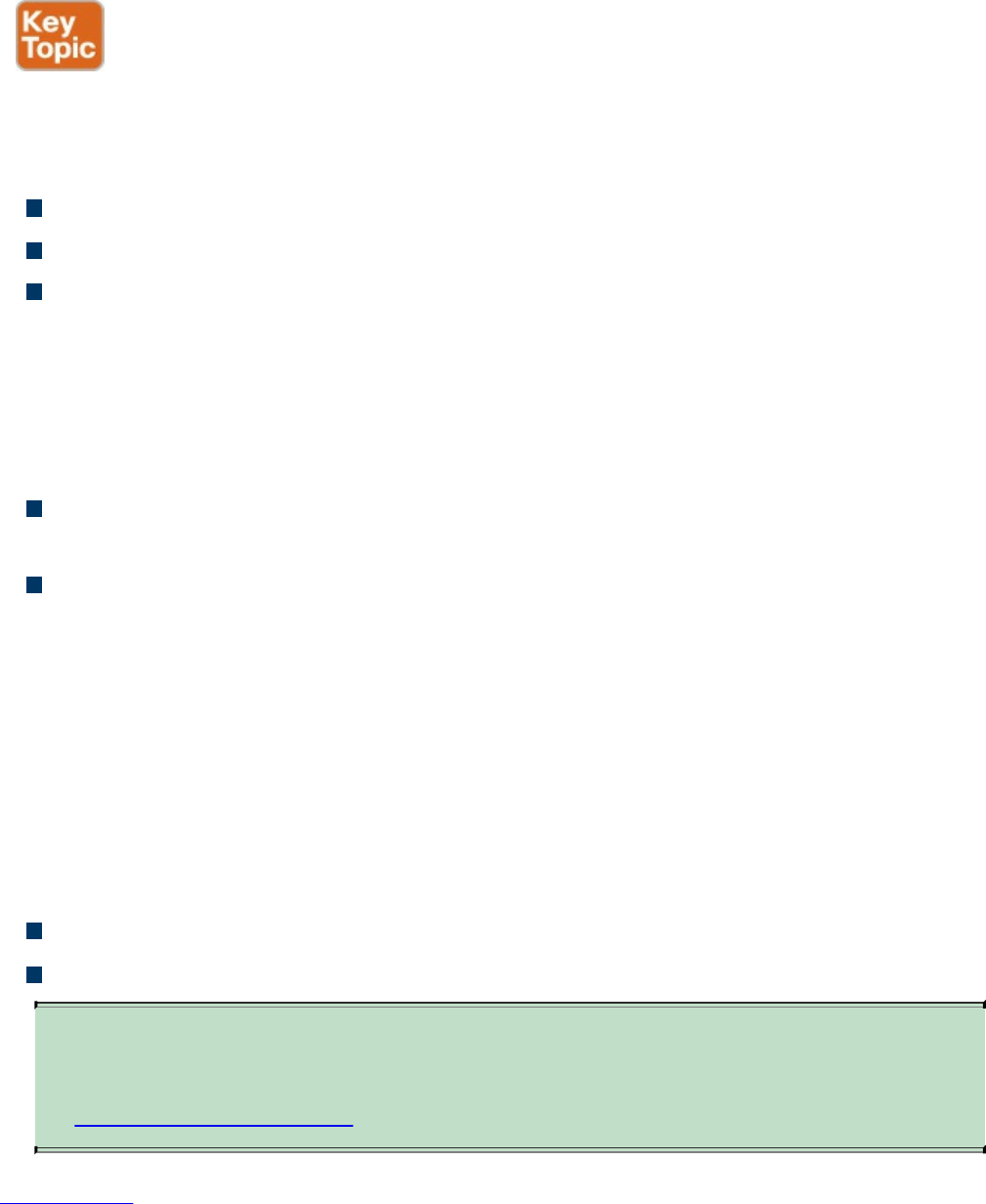

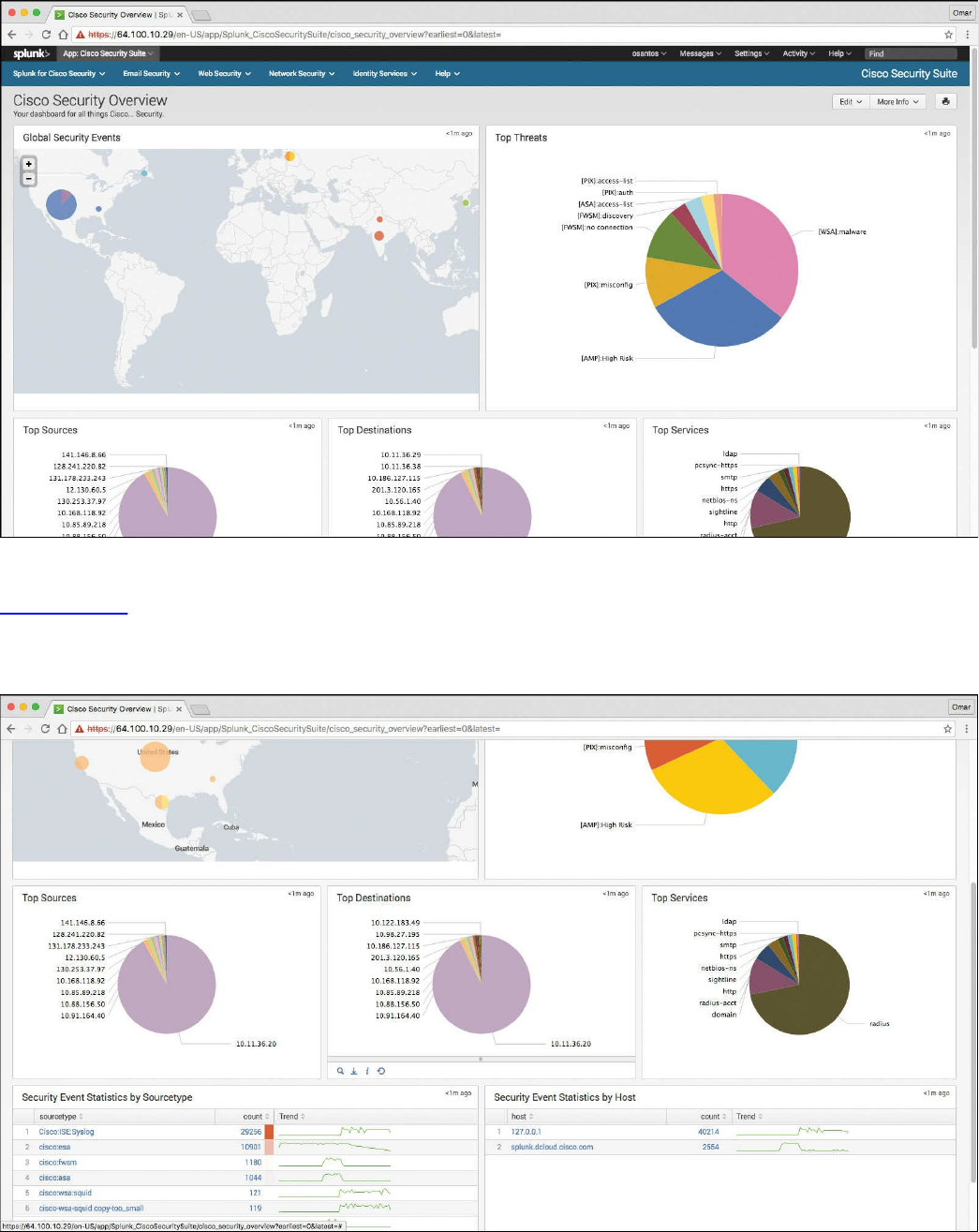

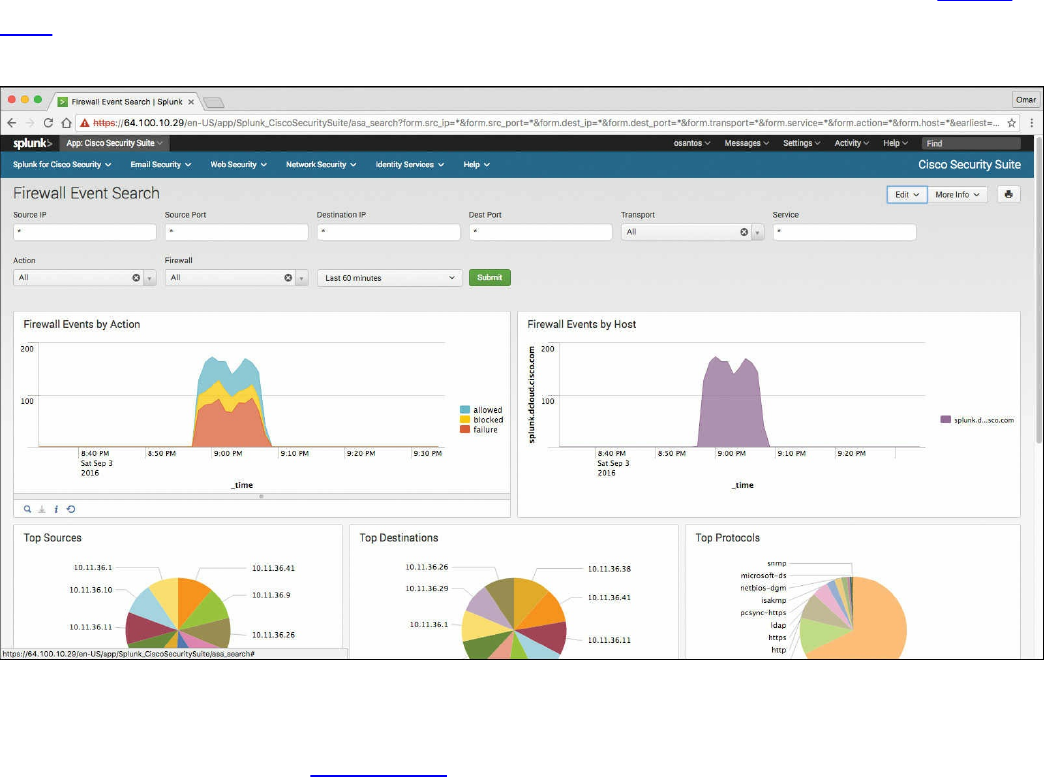

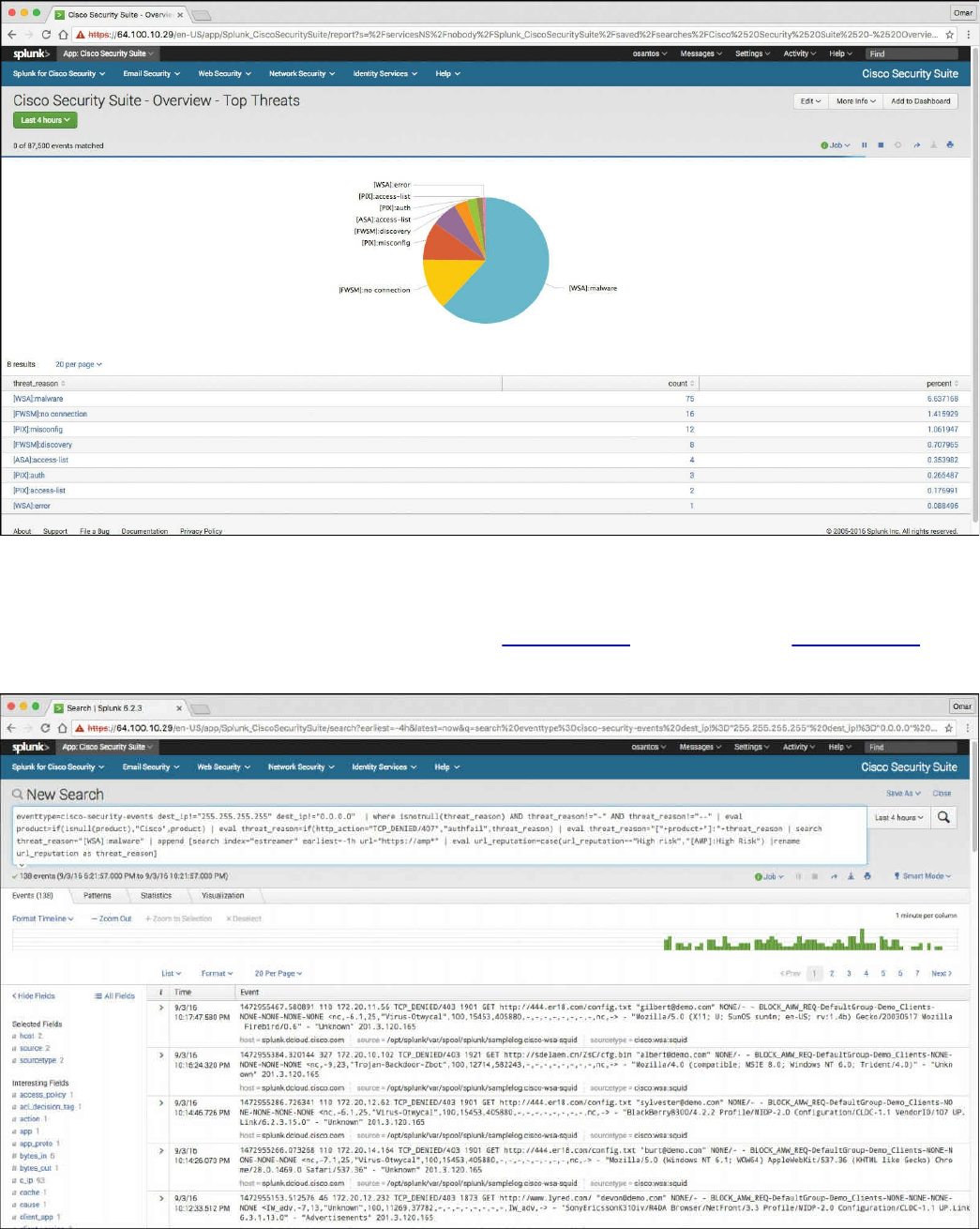

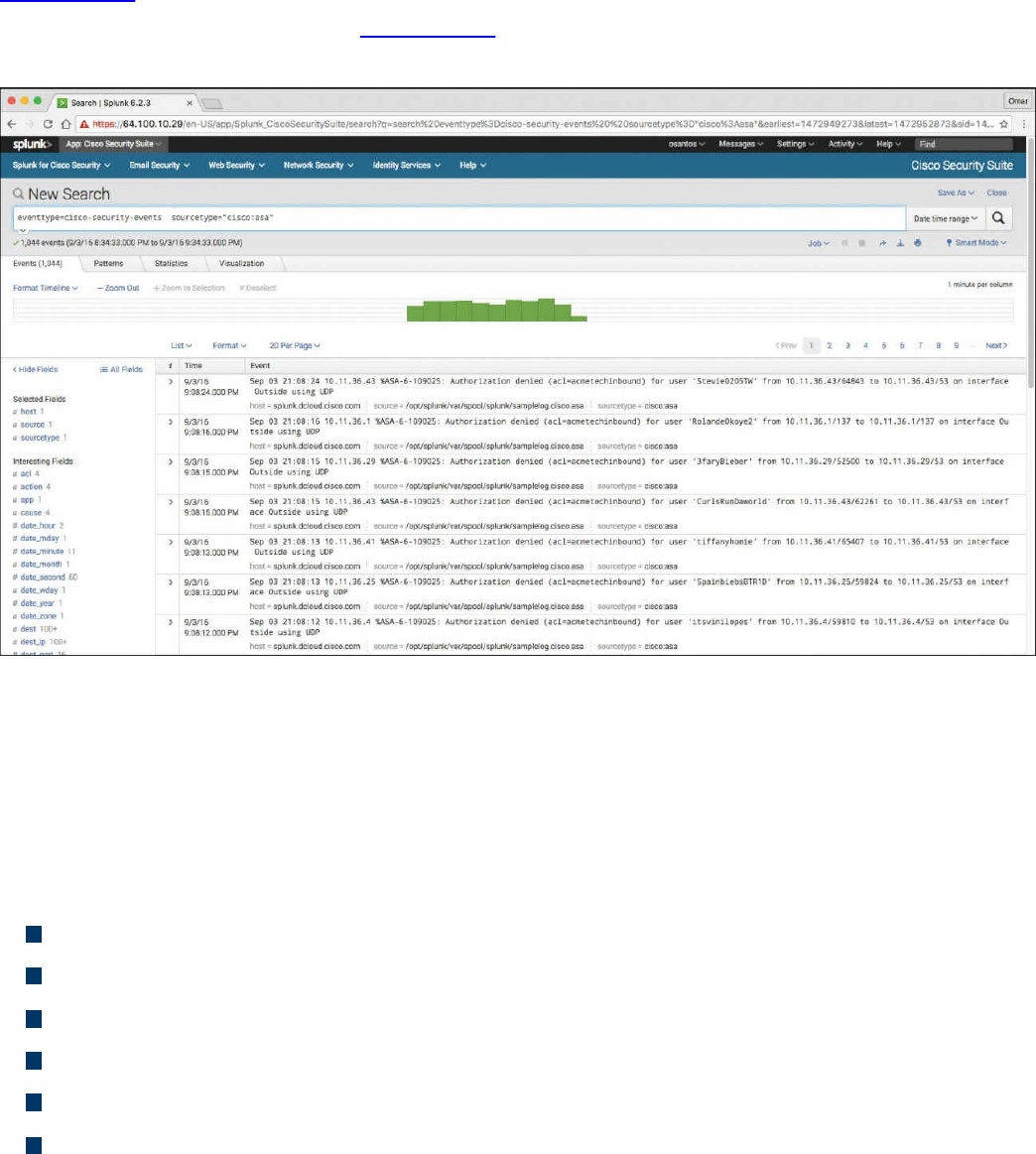

Splunk

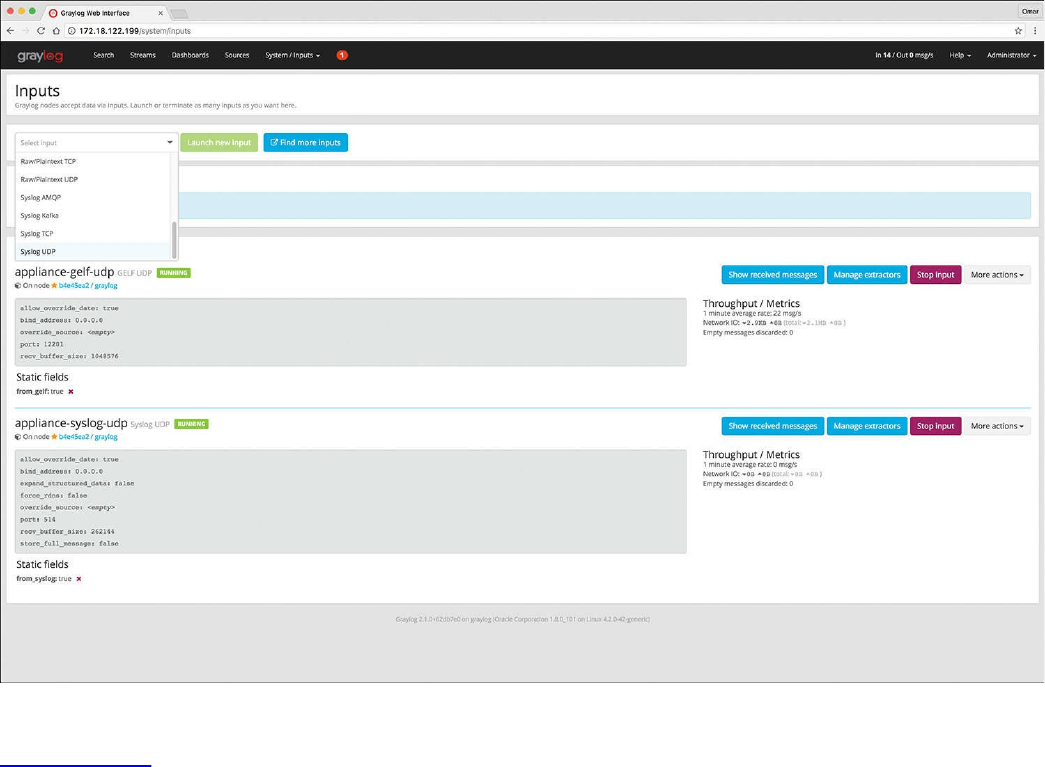

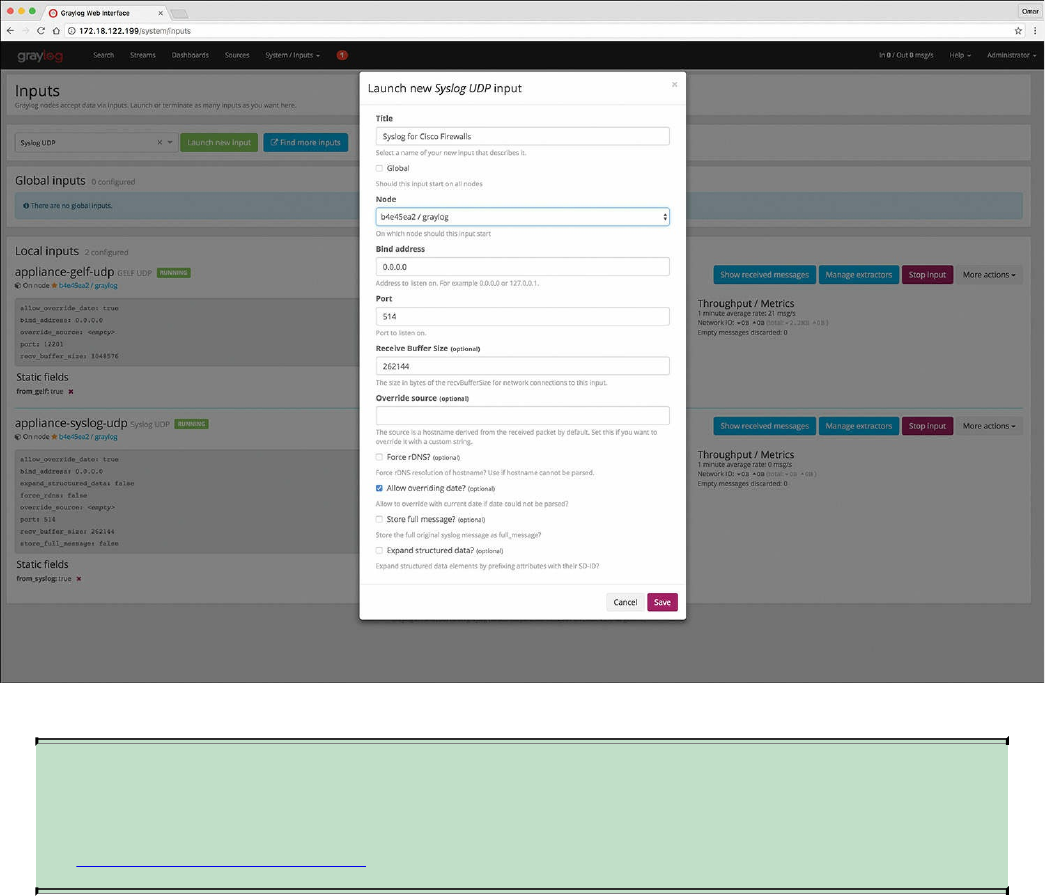

Graylog

Elasticsearch, Logstash, and Kibana (ELK) Stack

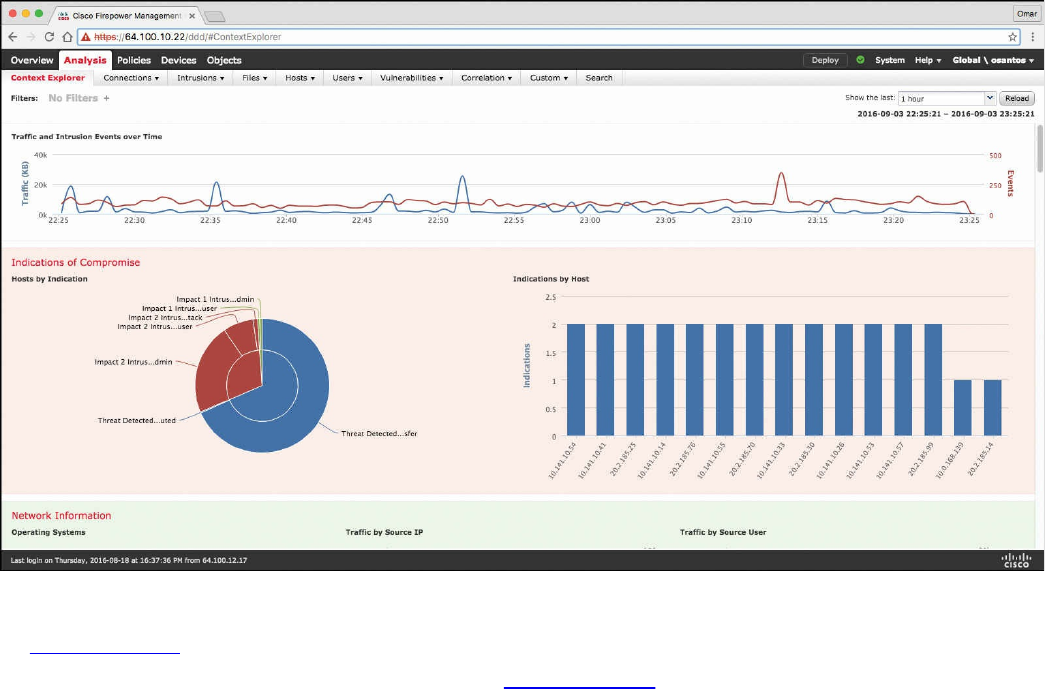

Next-Generation Firewall and Next-Generation IPS Logs

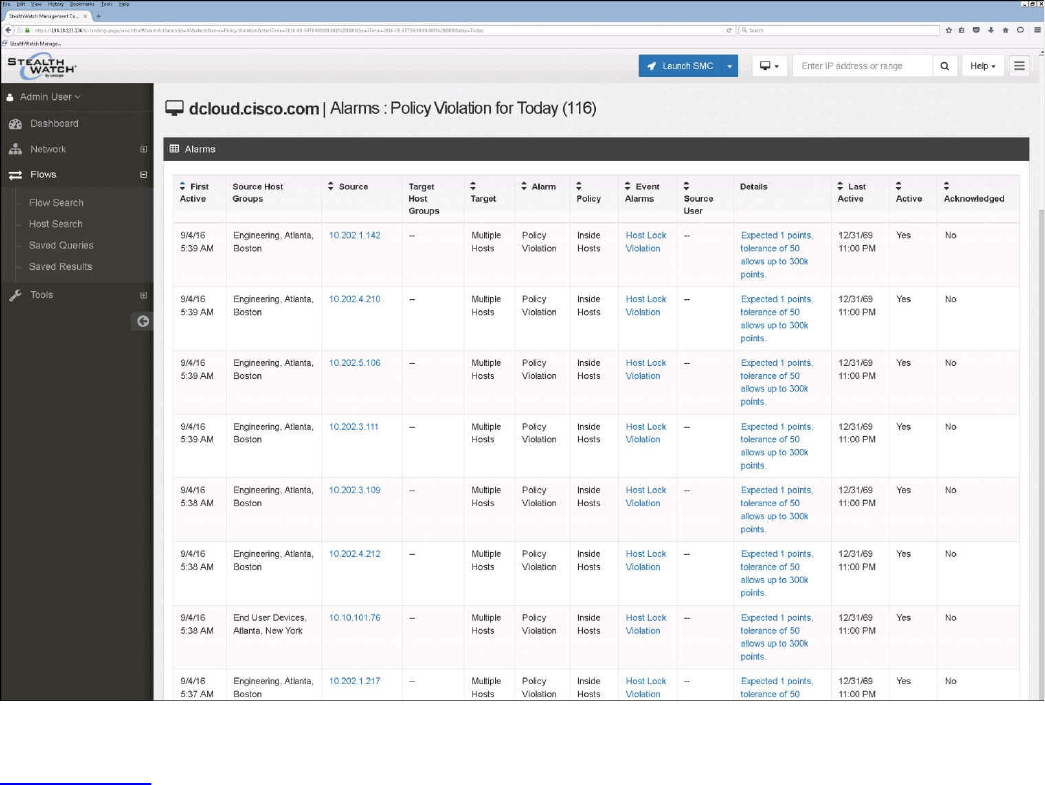

NetFlow Analysis

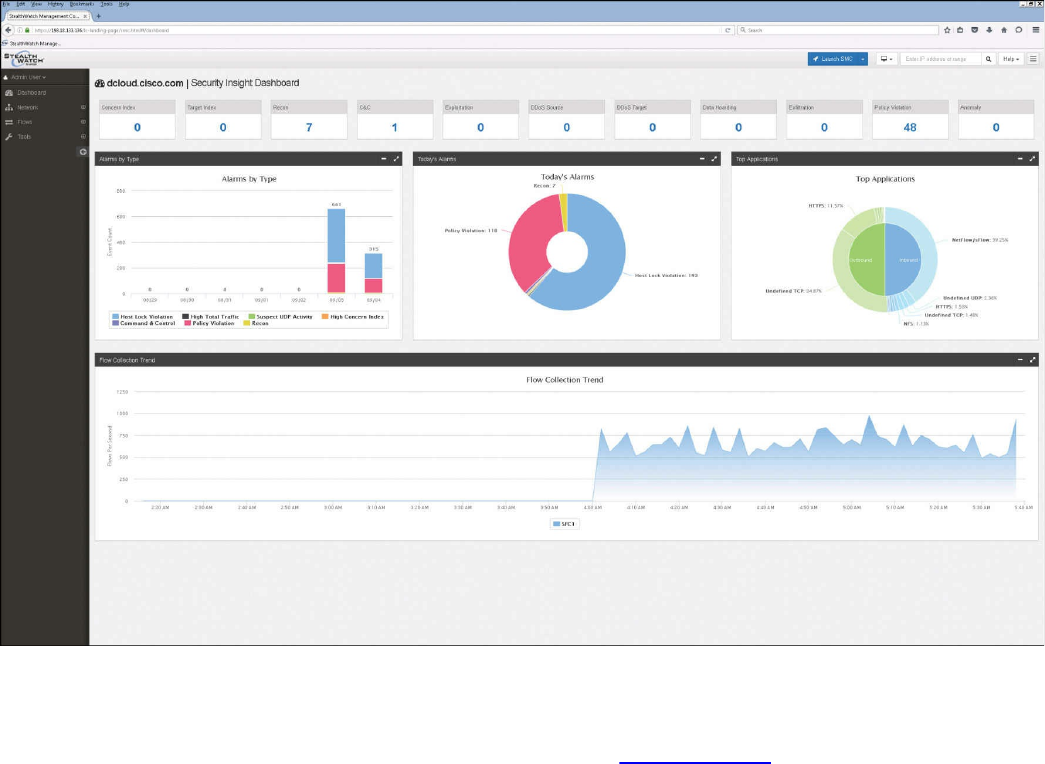

Commercial NetFlow Analysis Tools

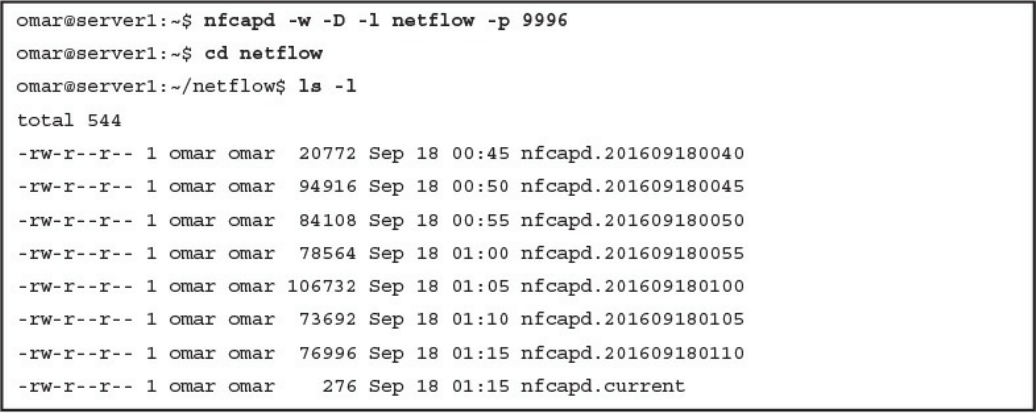

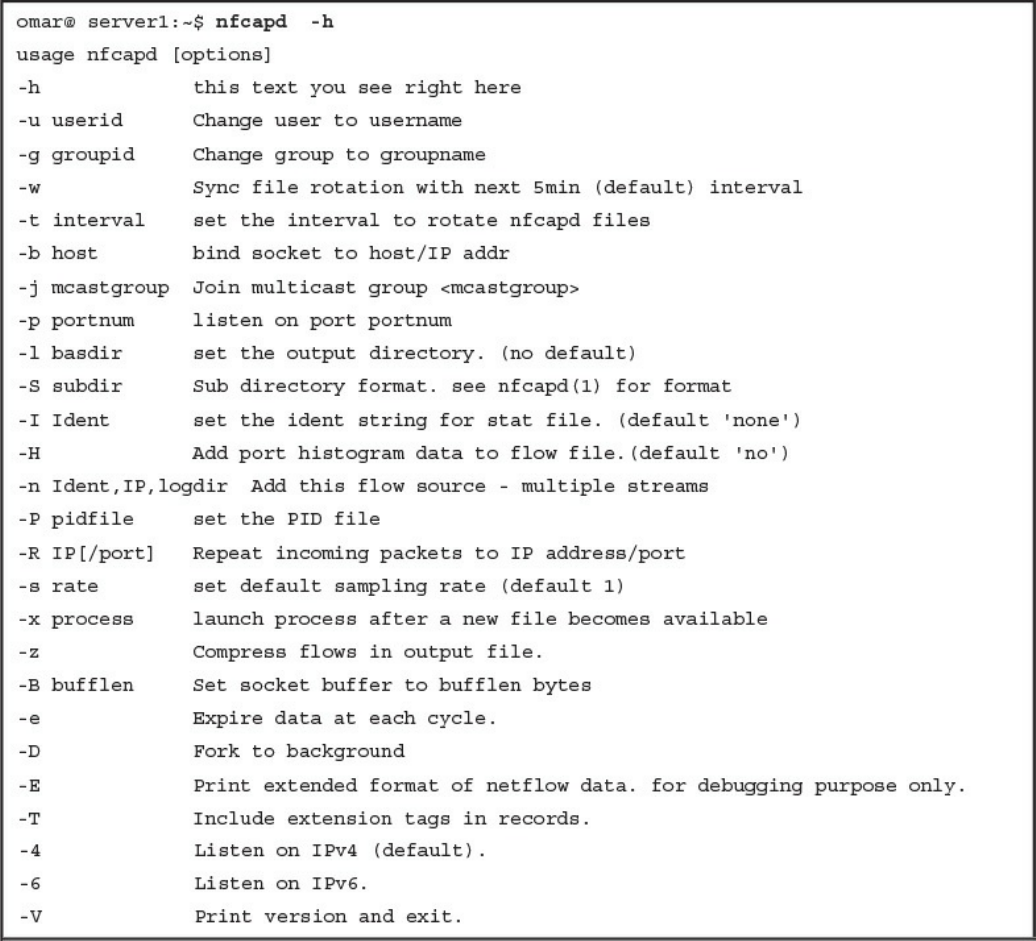

Open Source NetFlow Analysis Tools

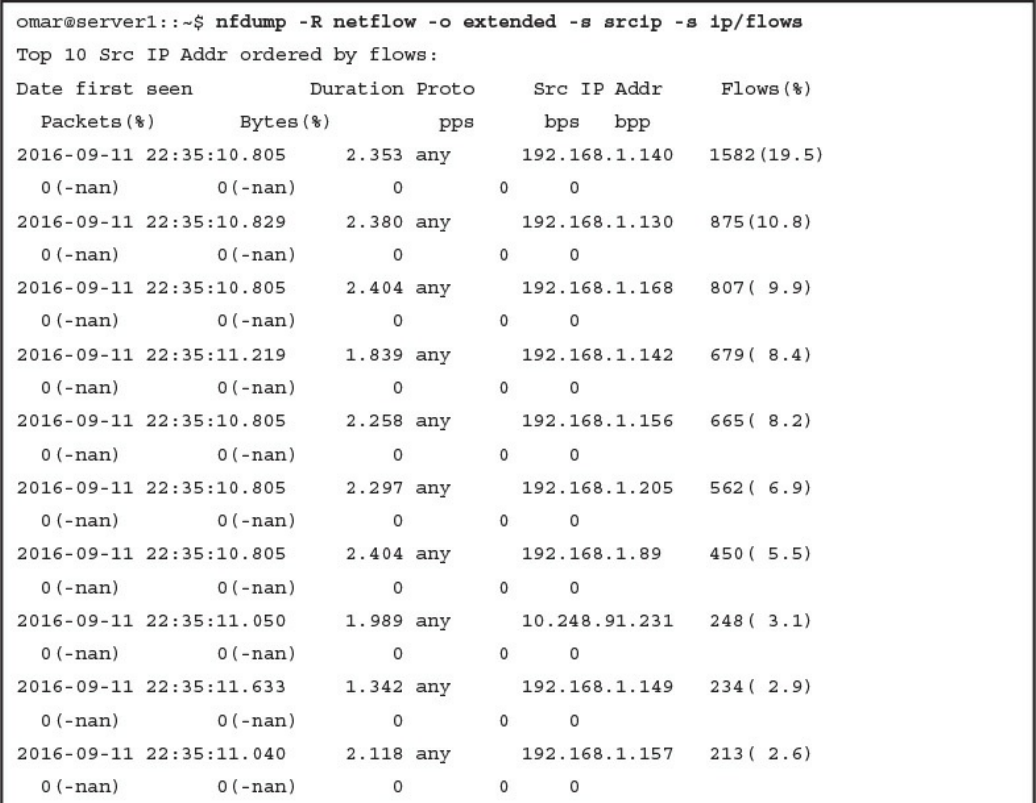

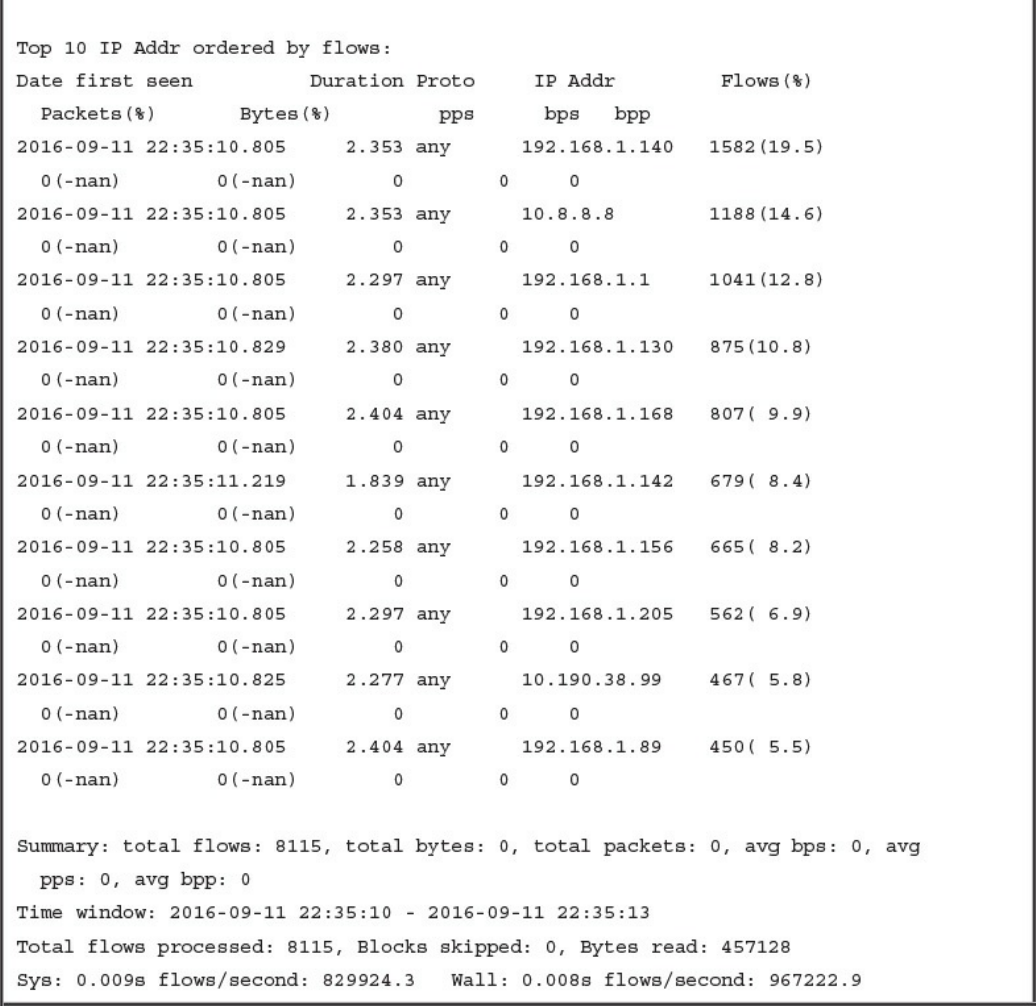

Counting, Grouping, and Mating NetFlow Records with Silk

Big Data Analytics for Cyber Security Network Telemetry

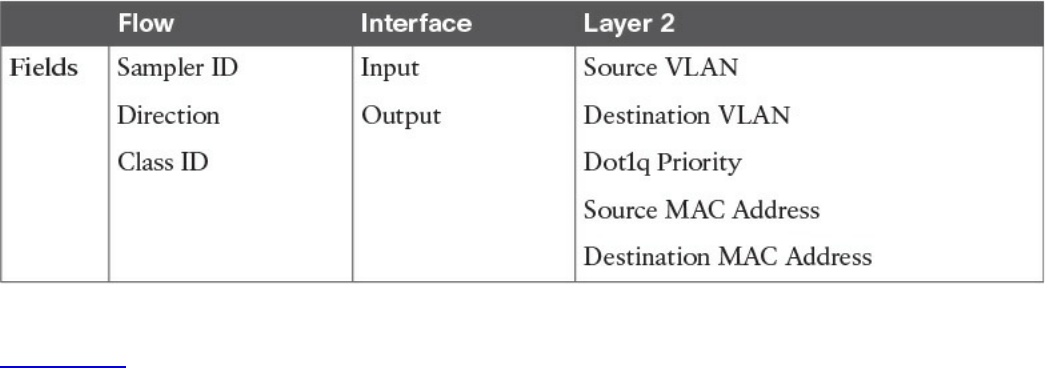

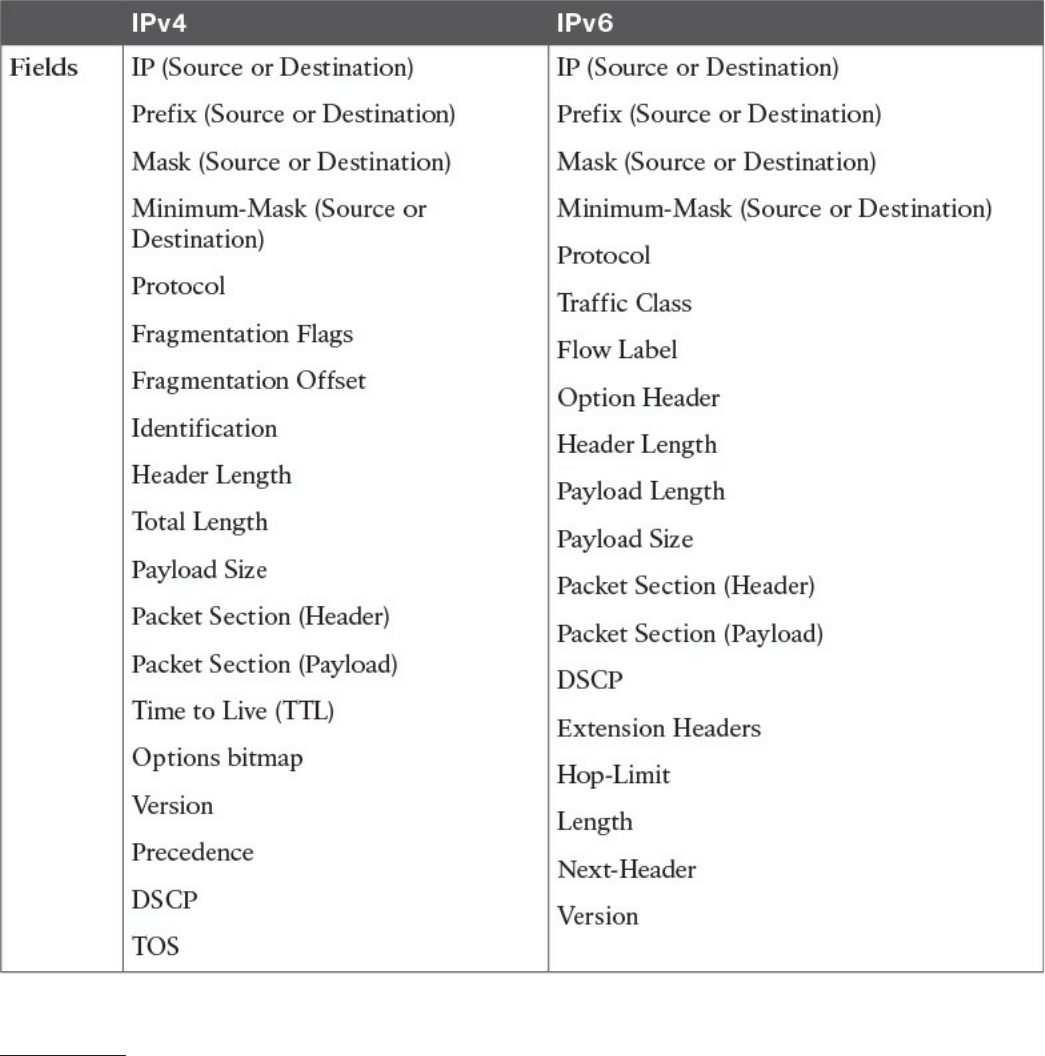

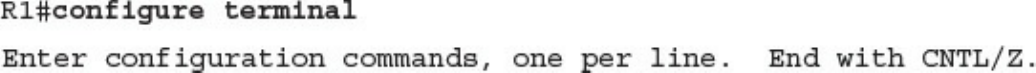

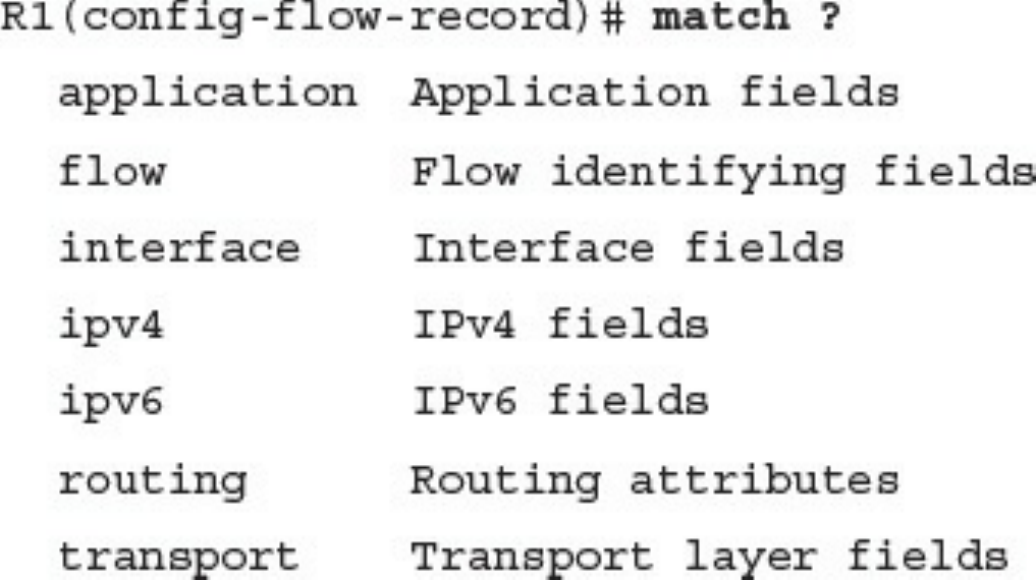

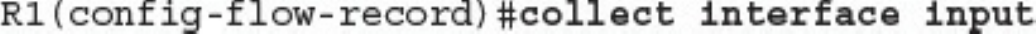

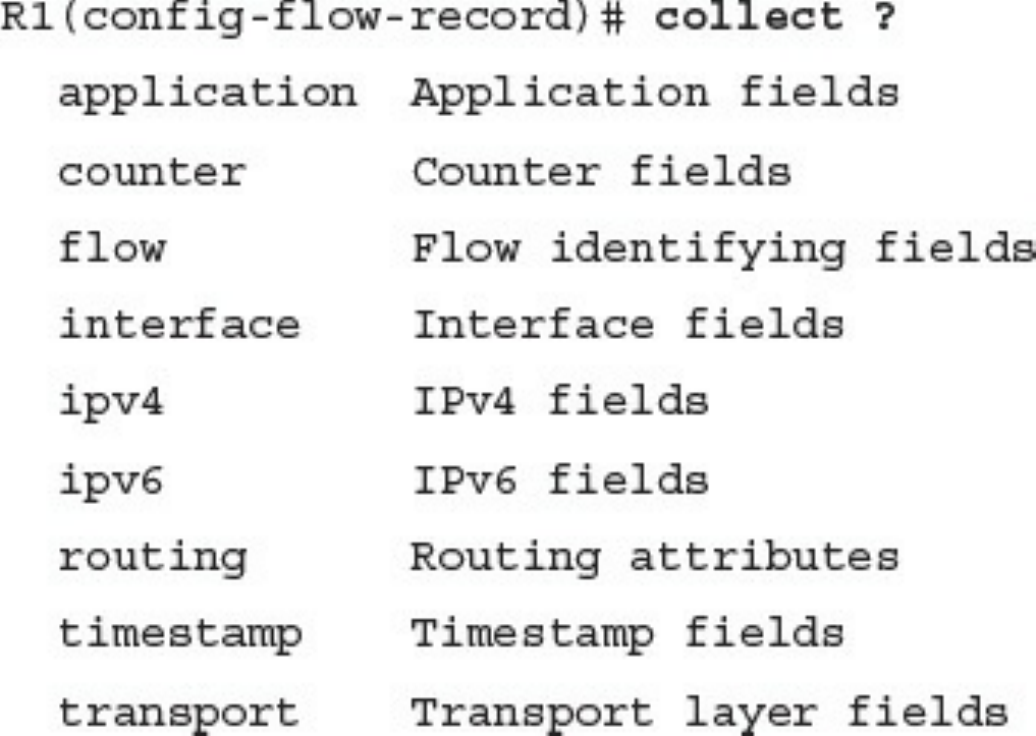

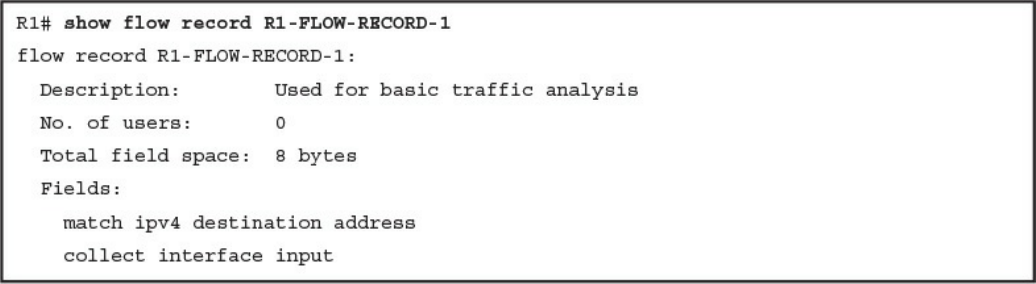

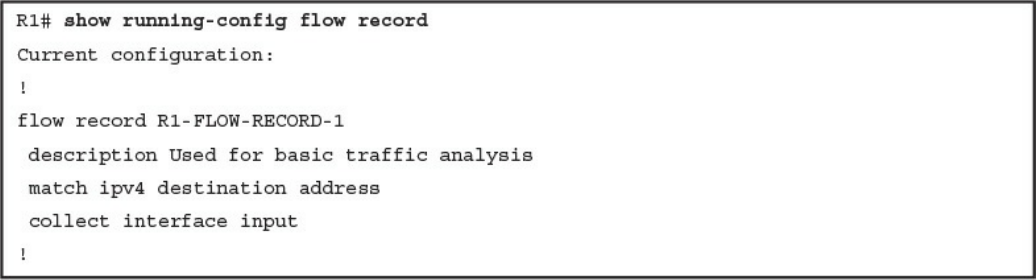

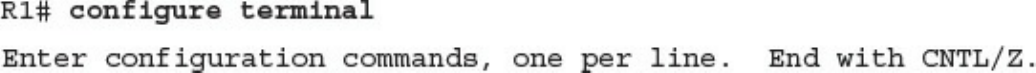

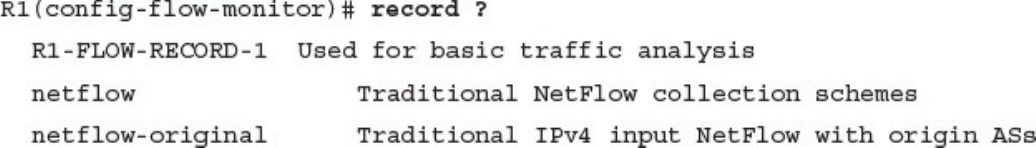

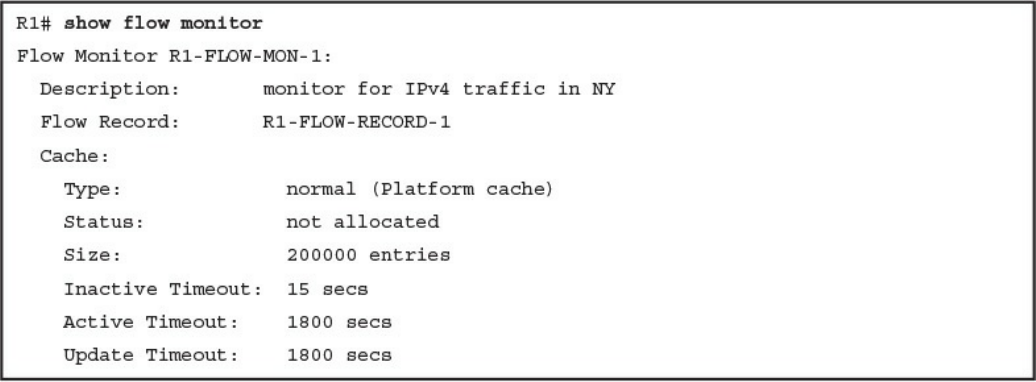

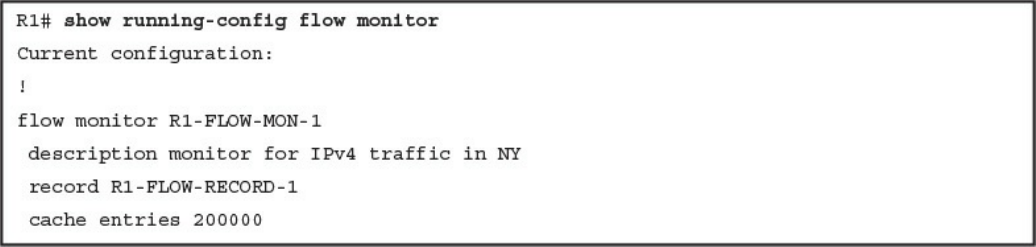

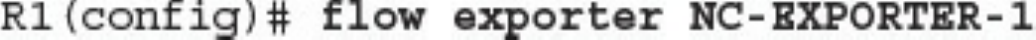

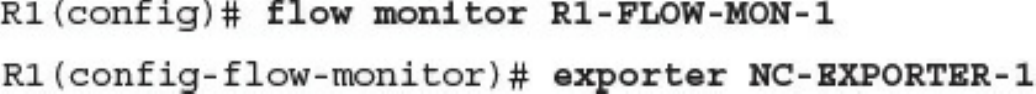

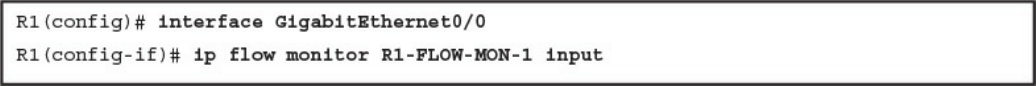

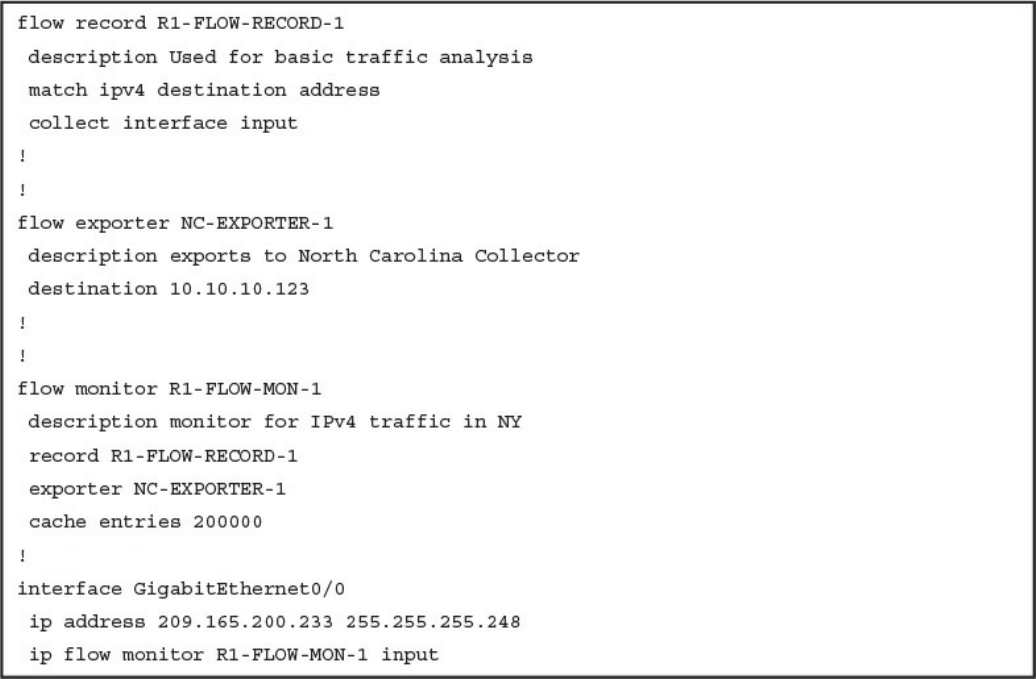

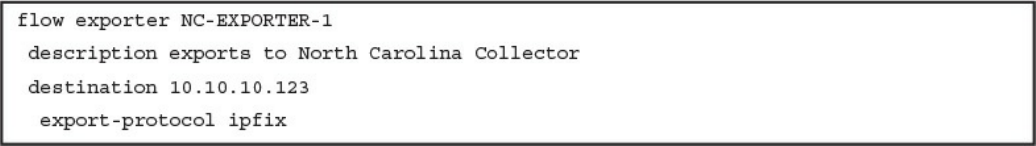

Configuring Flexible NetFlow in Cisco IOS and Cisco IOS-XE Devices

Cisco Application Visibility and Control (AVC)

Network Packet Capture

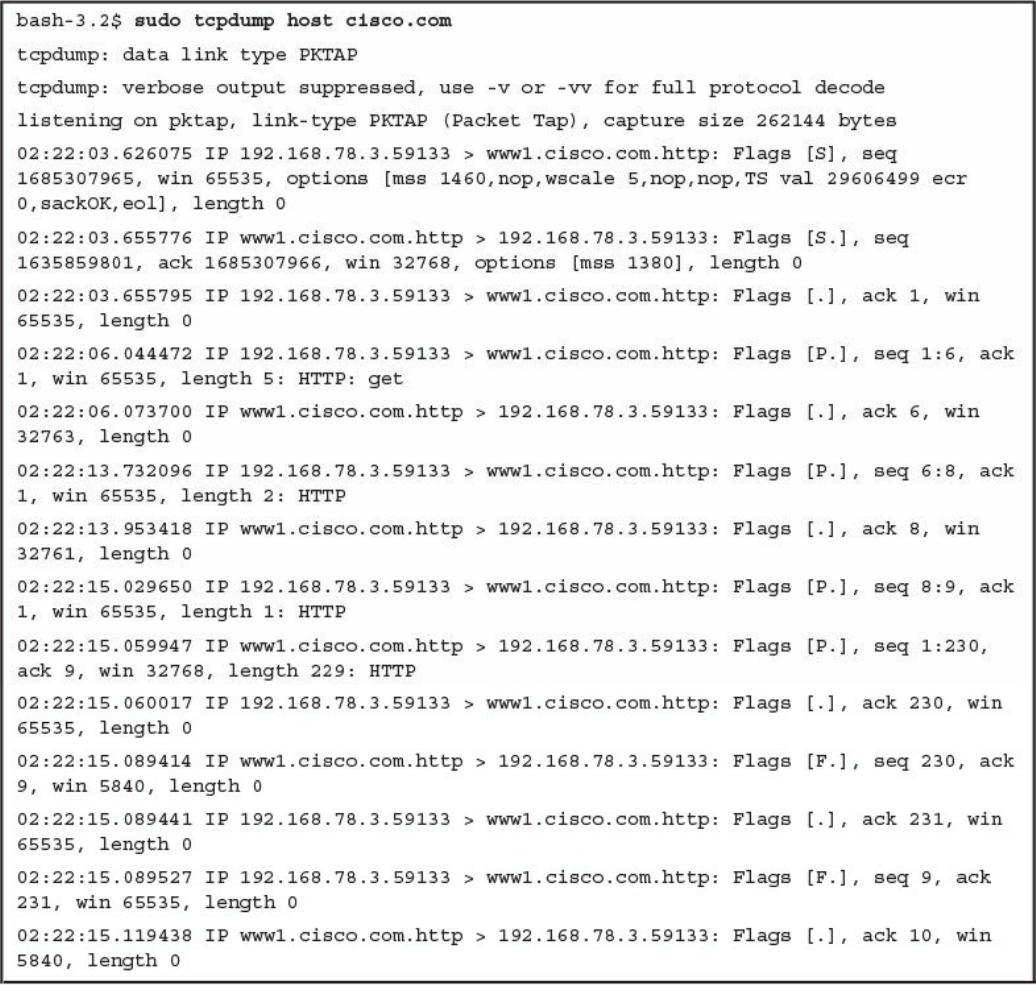

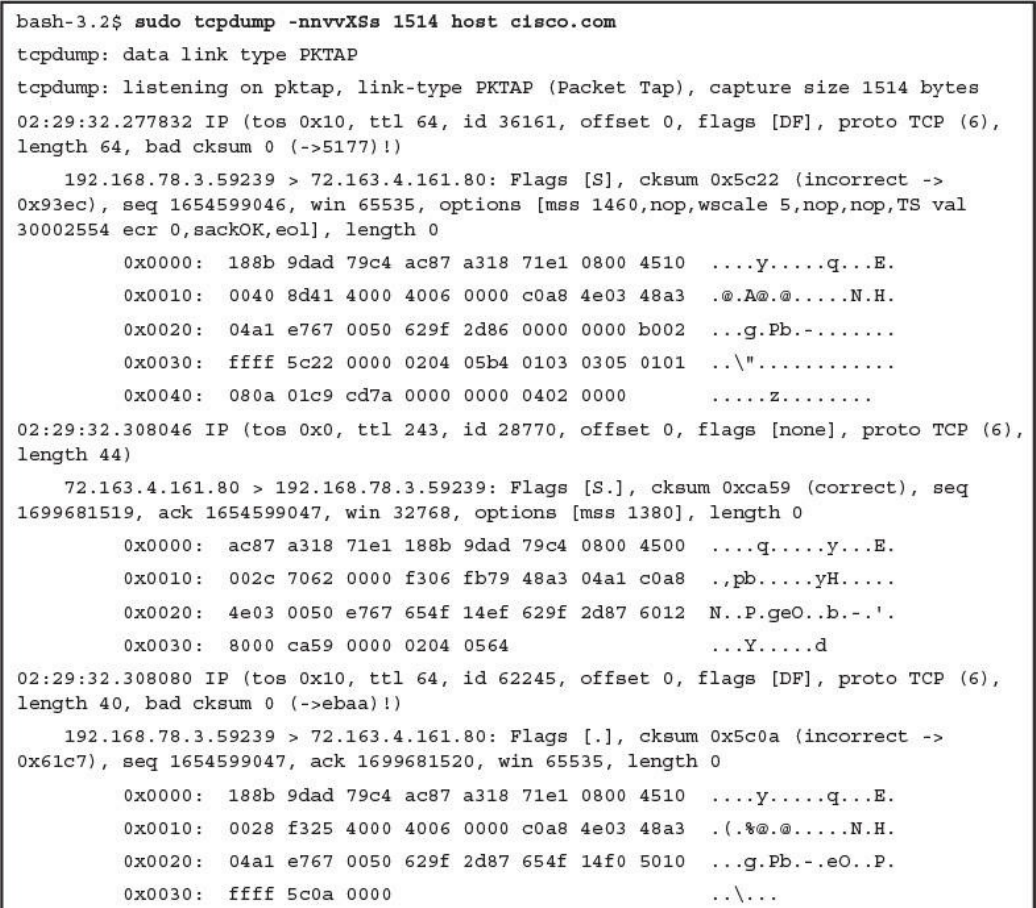

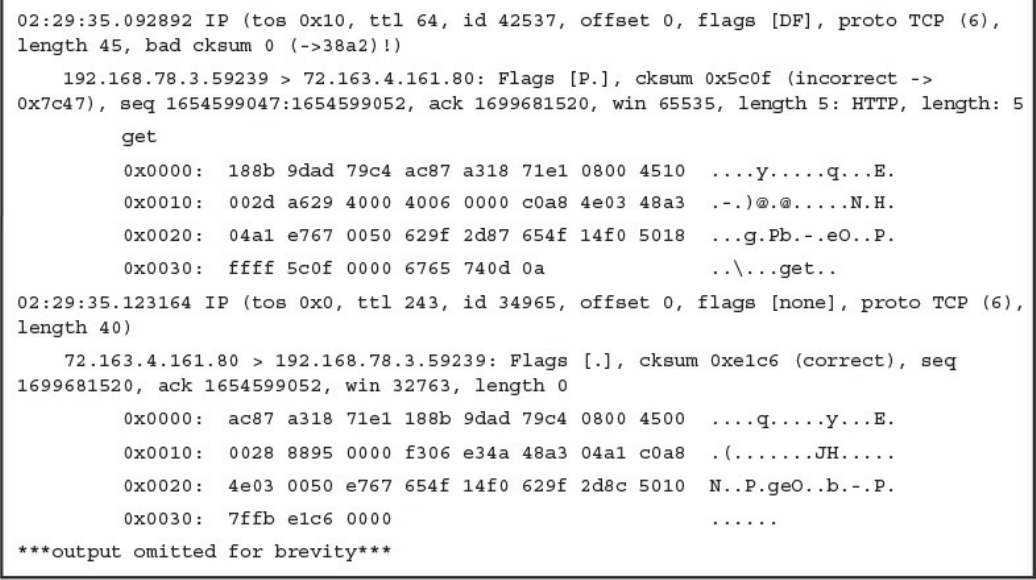

tcpdump

Wireshark

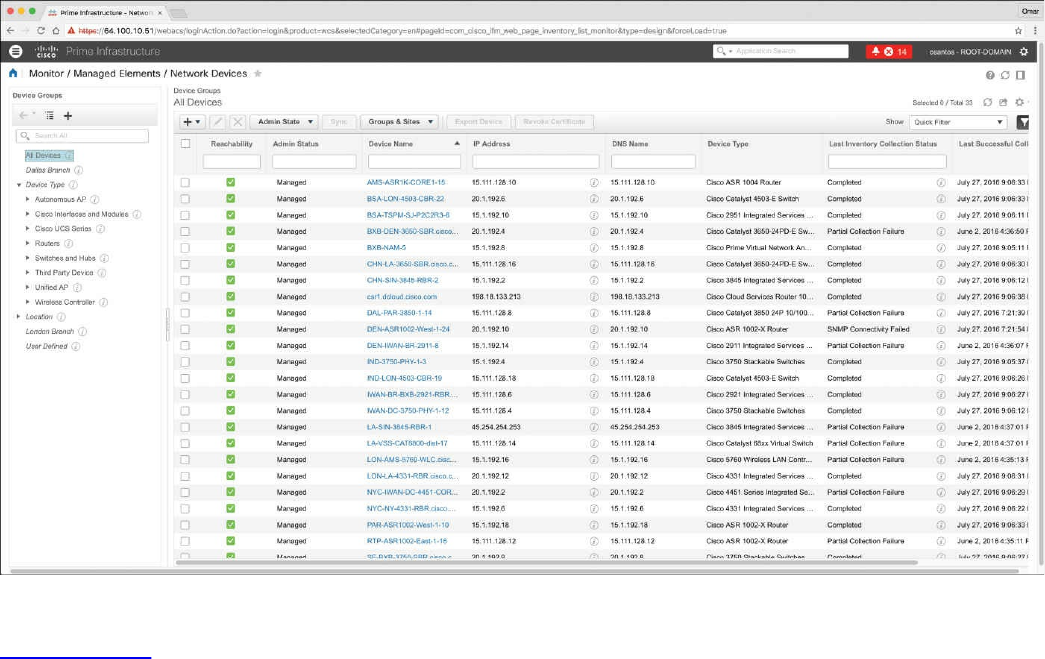

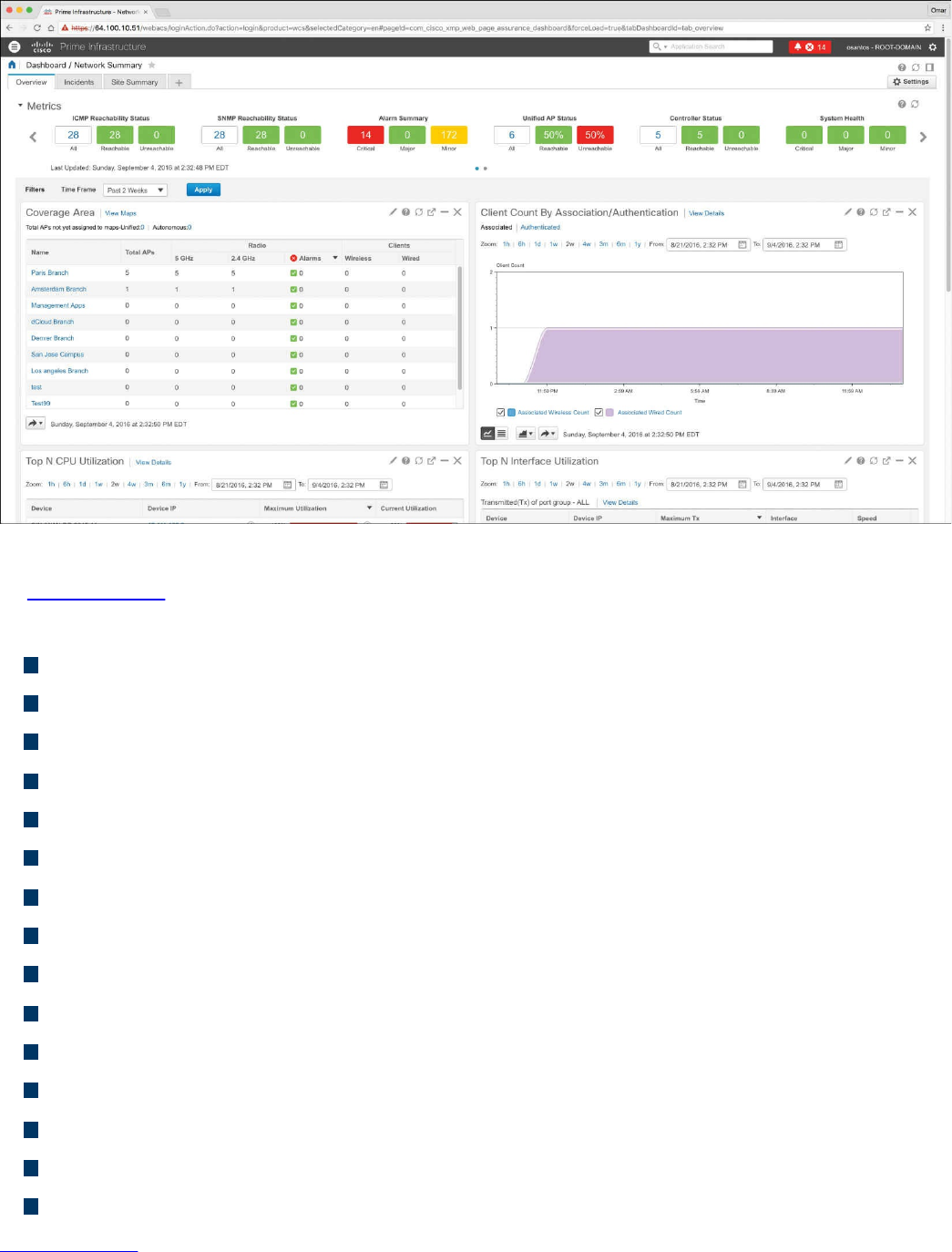

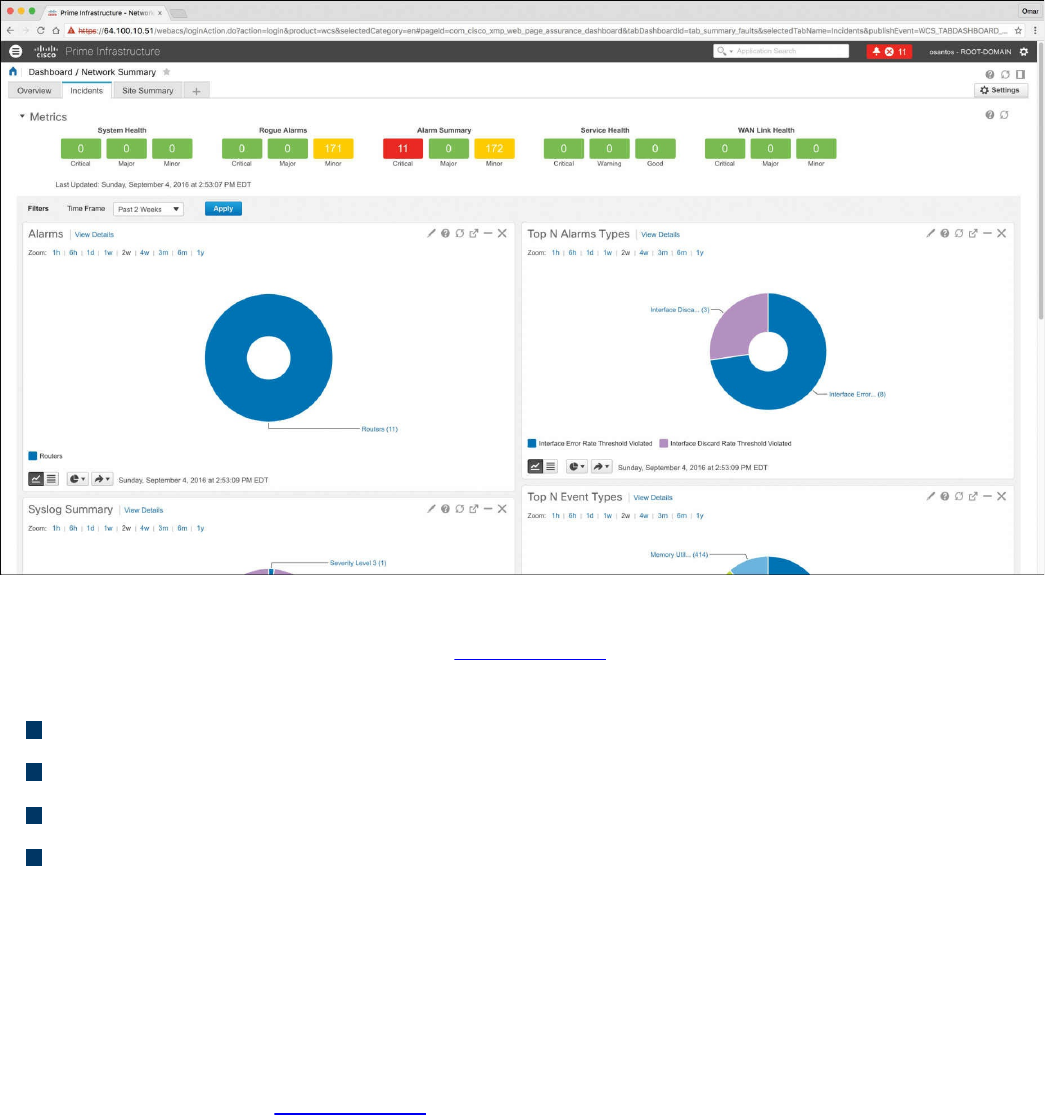

Cisco Prime Infrastructure

Host Telemetry

Logs from User Endpoints

Logs from Servers

Exam Preparation Tasks

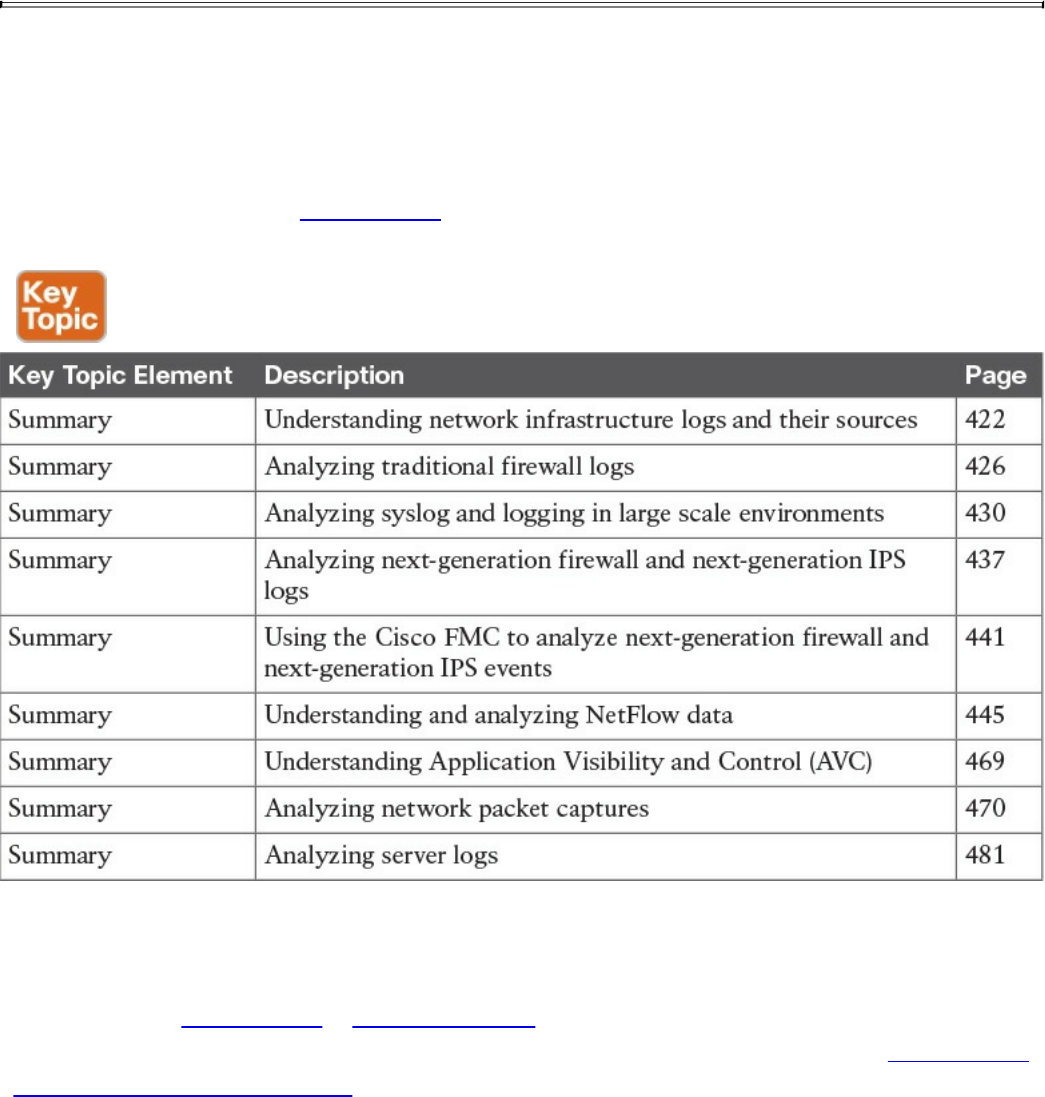

Review All Key Topics

Complete Tables and Lists from Memory

Define Key Terms

Q&A

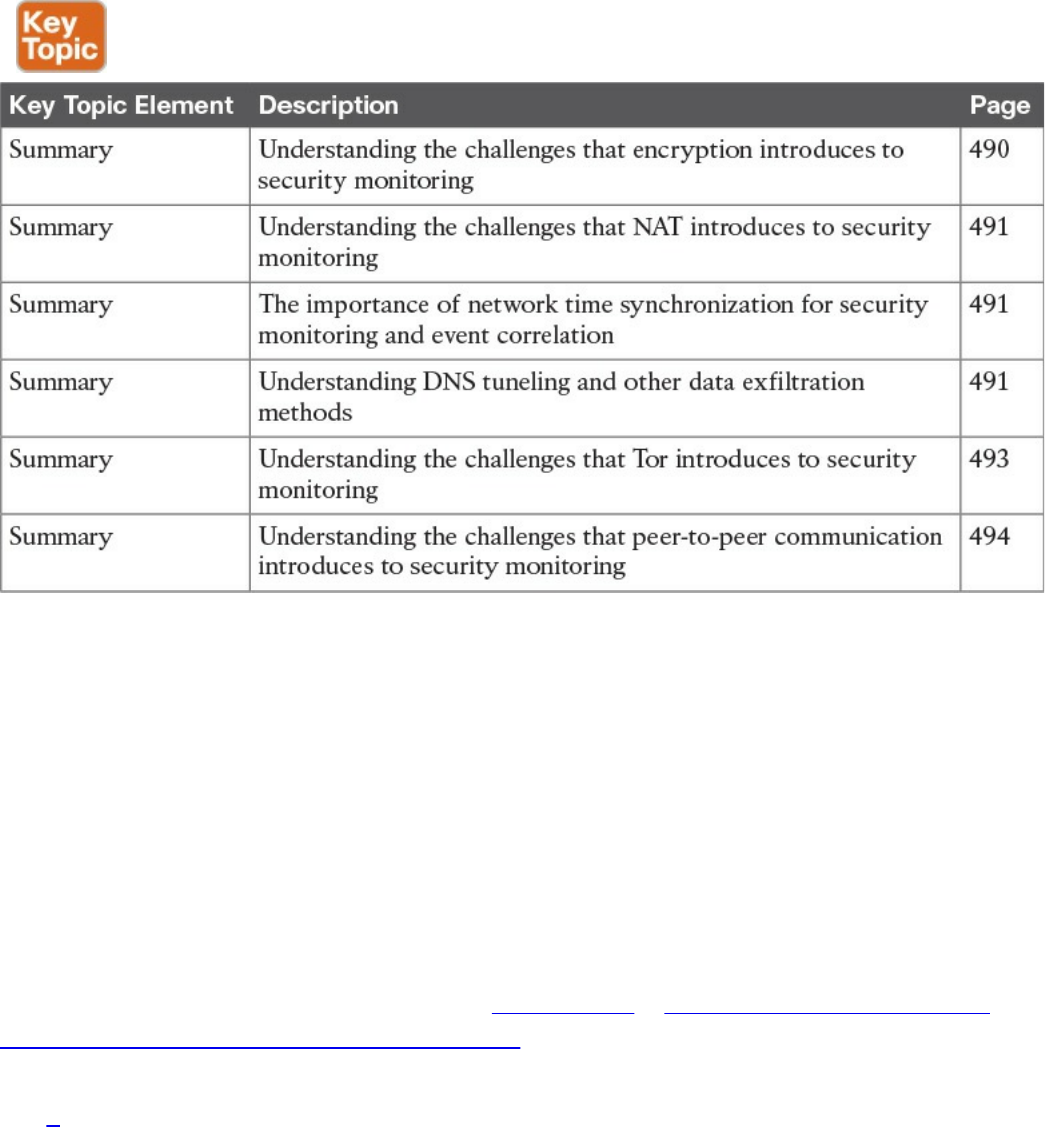

Chapter 12 Security Monitoring Operational Challenges

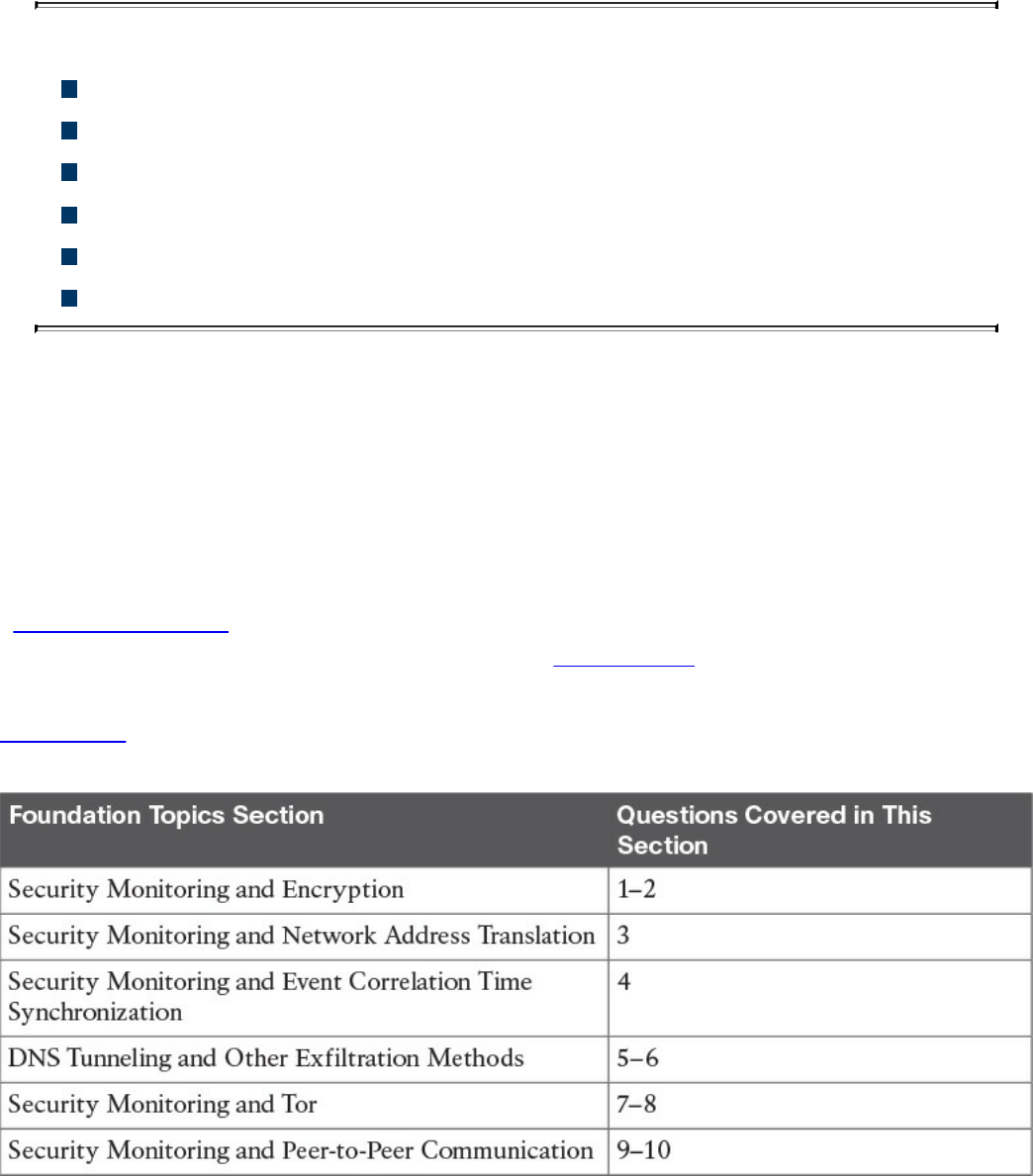

“Do I Know This Already?” Quiz

Foundation Topics

Security Monitoring and Encryption

Security Monitoring and Network Address Translation

Security Monitoring and Event Correlation Time Synchronization

DNS Tunneling and Other Exfiltration Methods

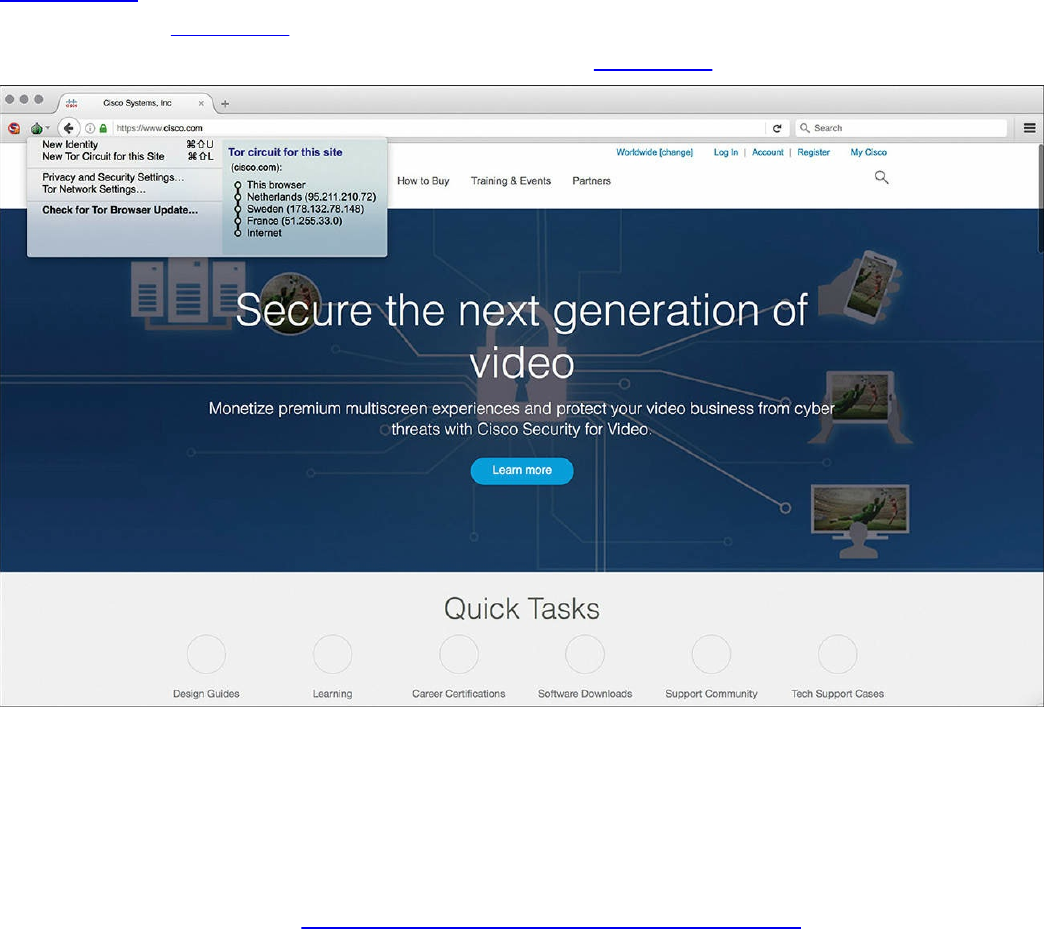

Security Monitoring and Tor

Security Monitoring and Peer-to-Peer Communication

Exam Preparation Tasks

Review All Key Topics

Define Key Terms

Q&A

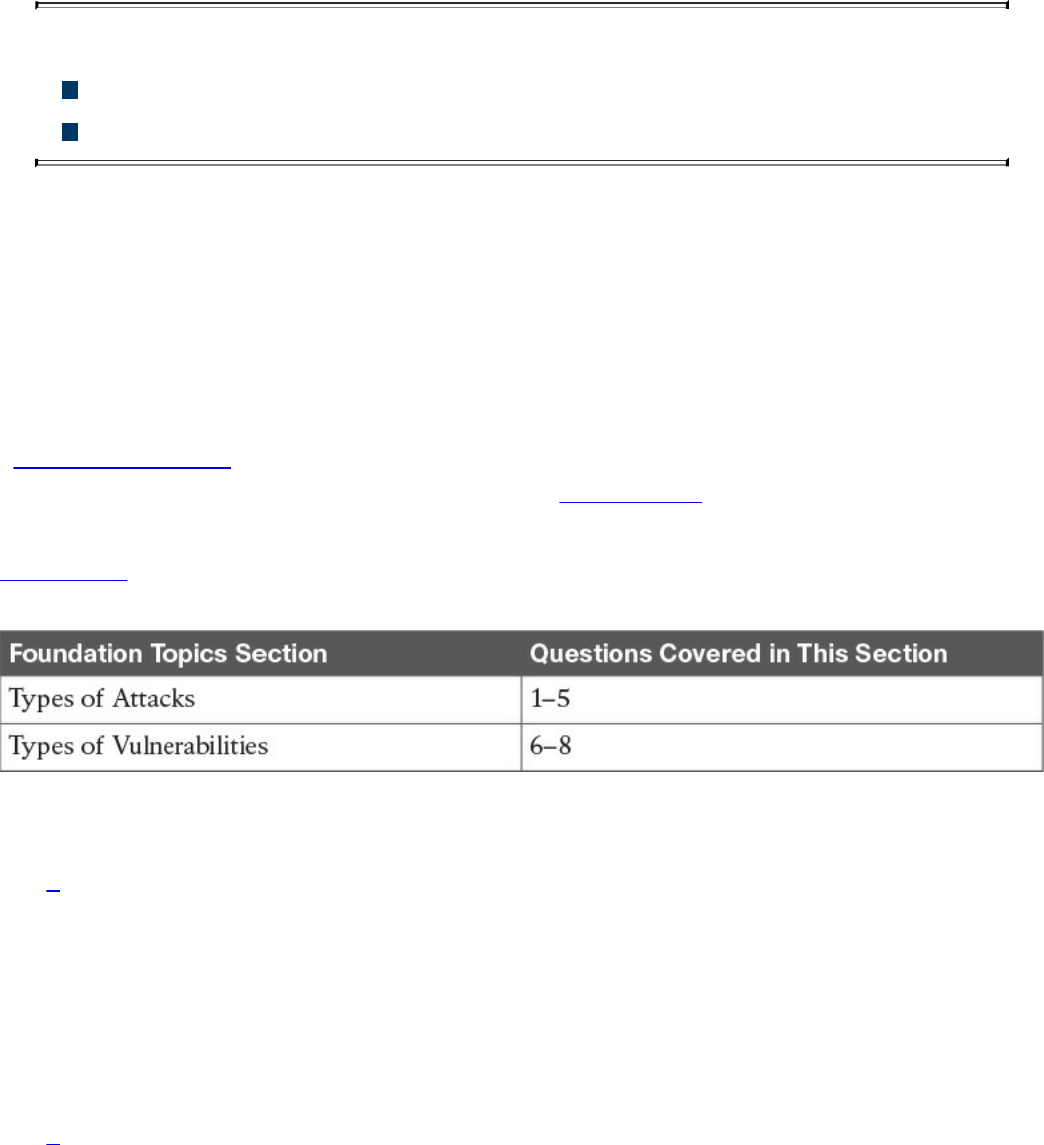

Chapter 13 Types of Attacks and Vulnerabilities

“Do I Know This Already?” Quiz

Foundation Topics

Types of Attacks

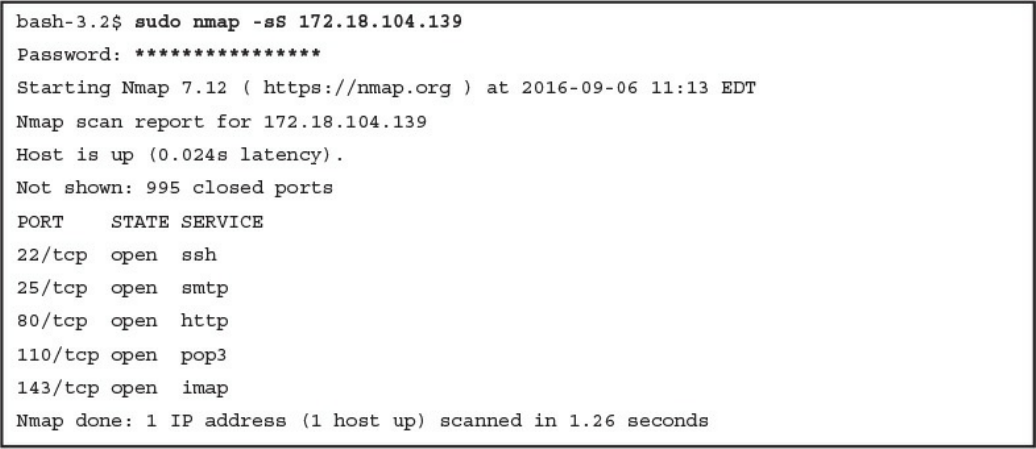

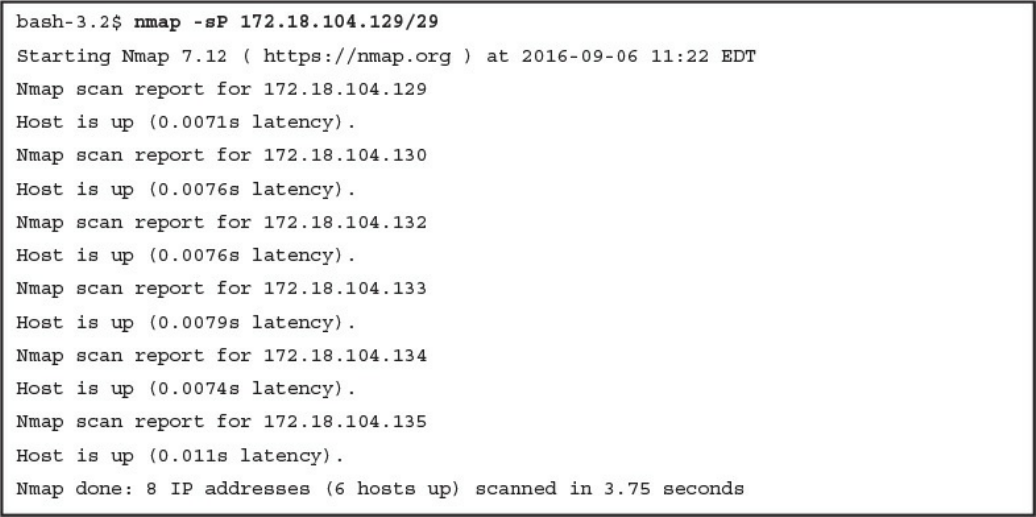

Reconnaissance Attacks

Social Engineering

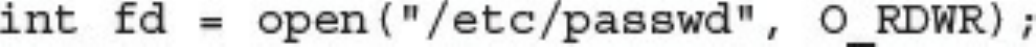

Privilege Escalation Attacks

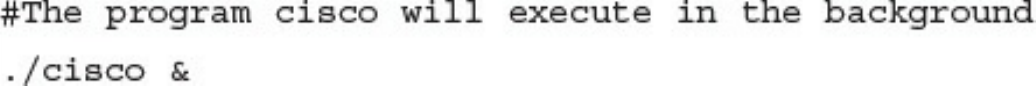

Backdoors

Code Execution

Man-in-the-Middle Attacks

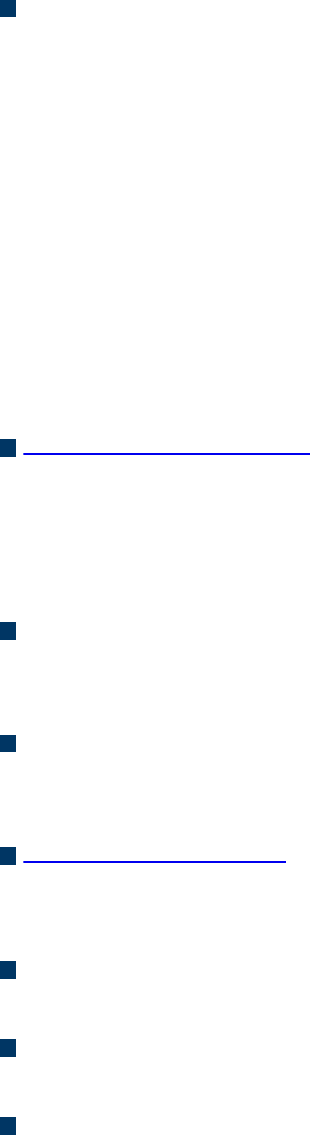

Denial-of-Service Attacks

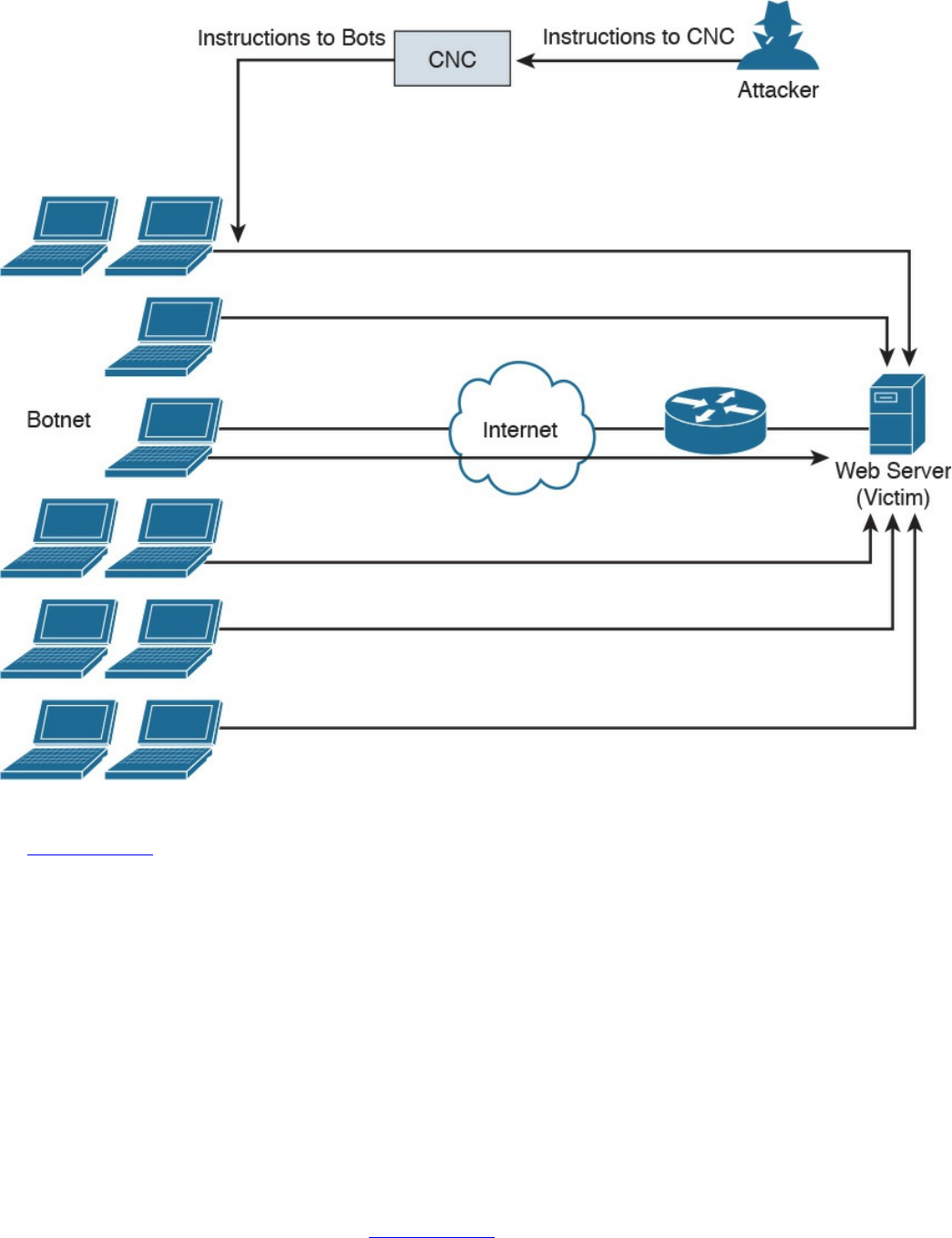

Direct DDoS

Botnets Participating in DDoS Attacks

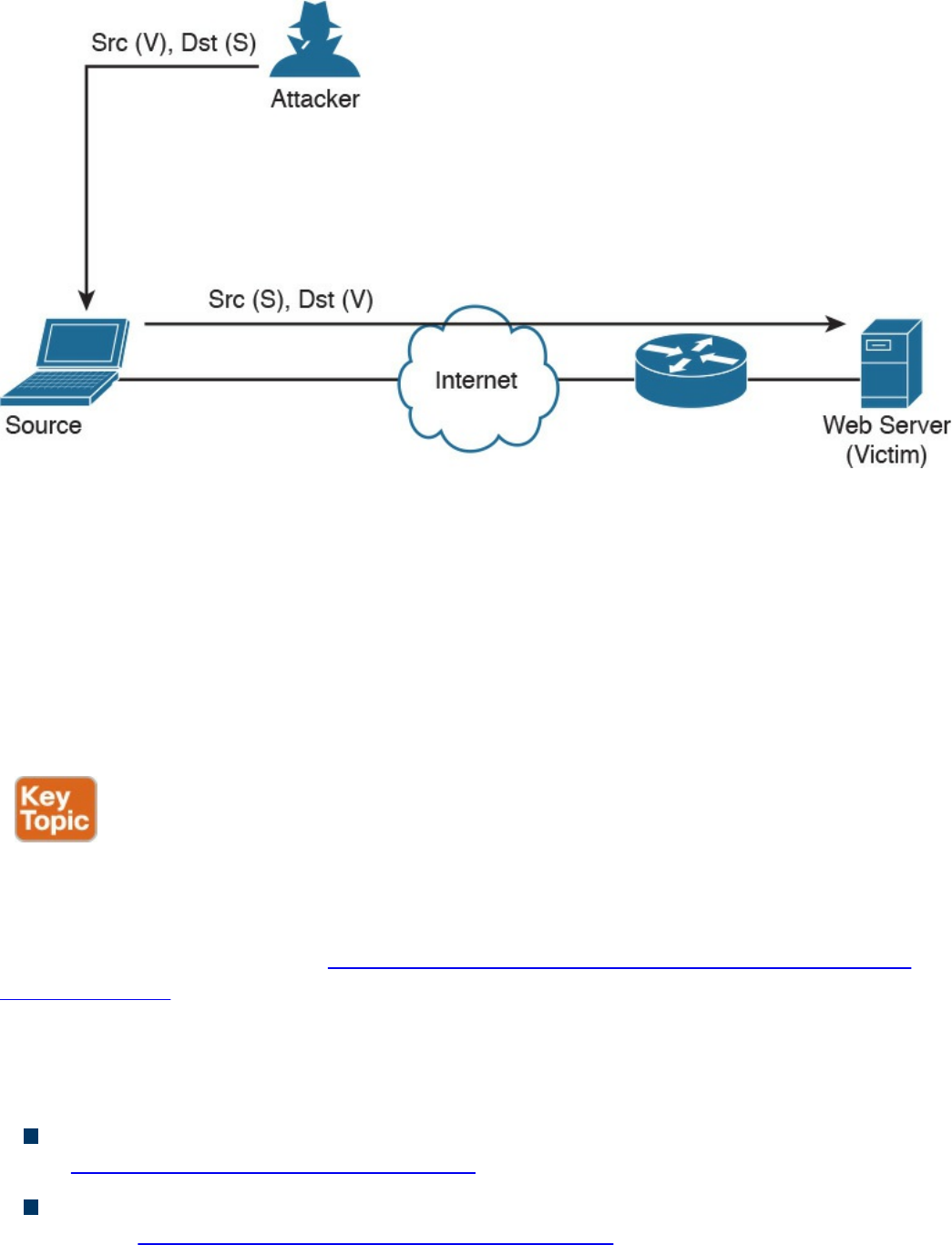

Reflected DDoS Attacks

Attack Methods for Data Exfiltration

ARP Cache Poisoning

Spoofing Attacks

Route Manipulation Attacks

Password Attacks

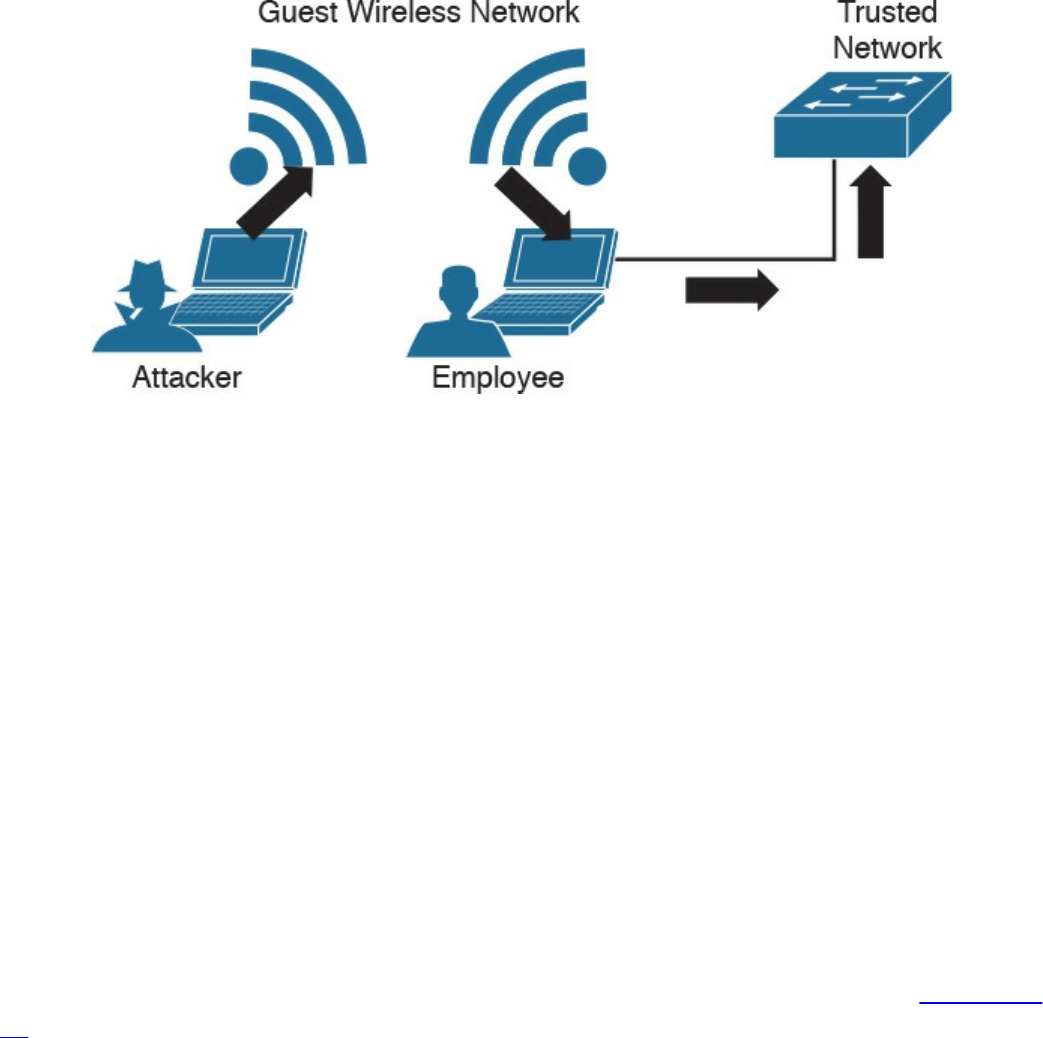

Wireless Attacks

Types of Vulnerabilities

Exam Preparation Tasks

Review All Key Topics

Define Key Terms

Q&A

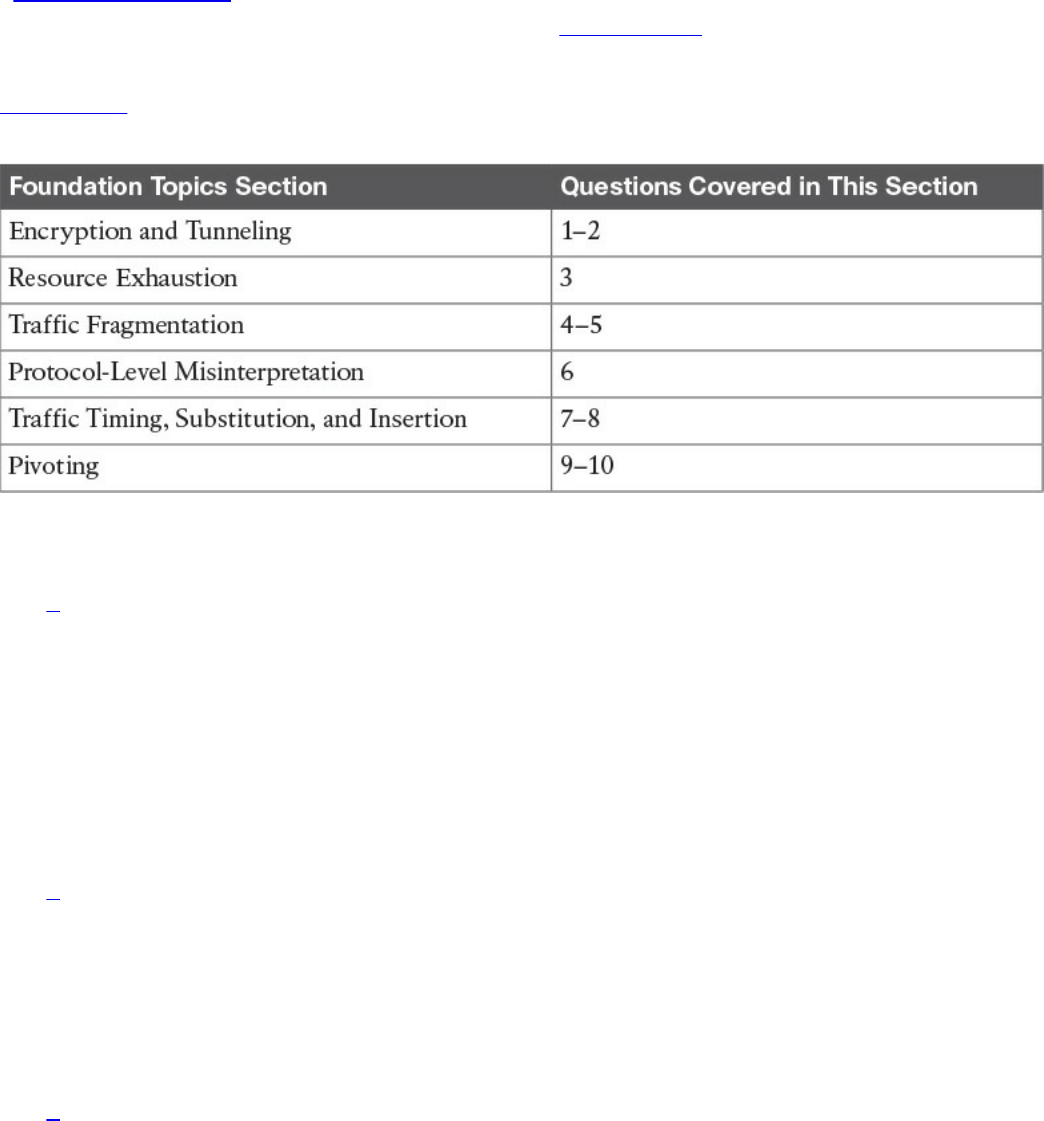

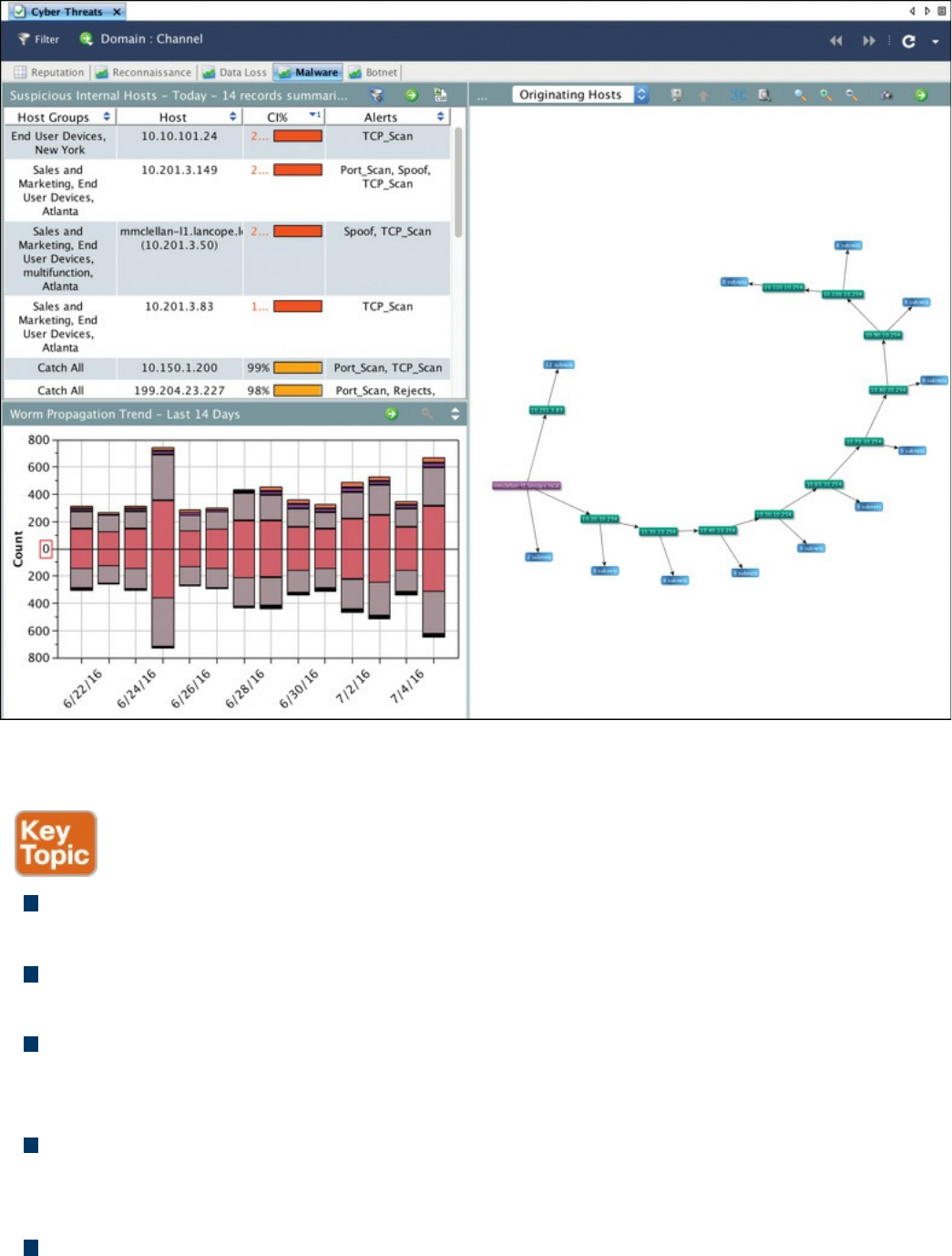

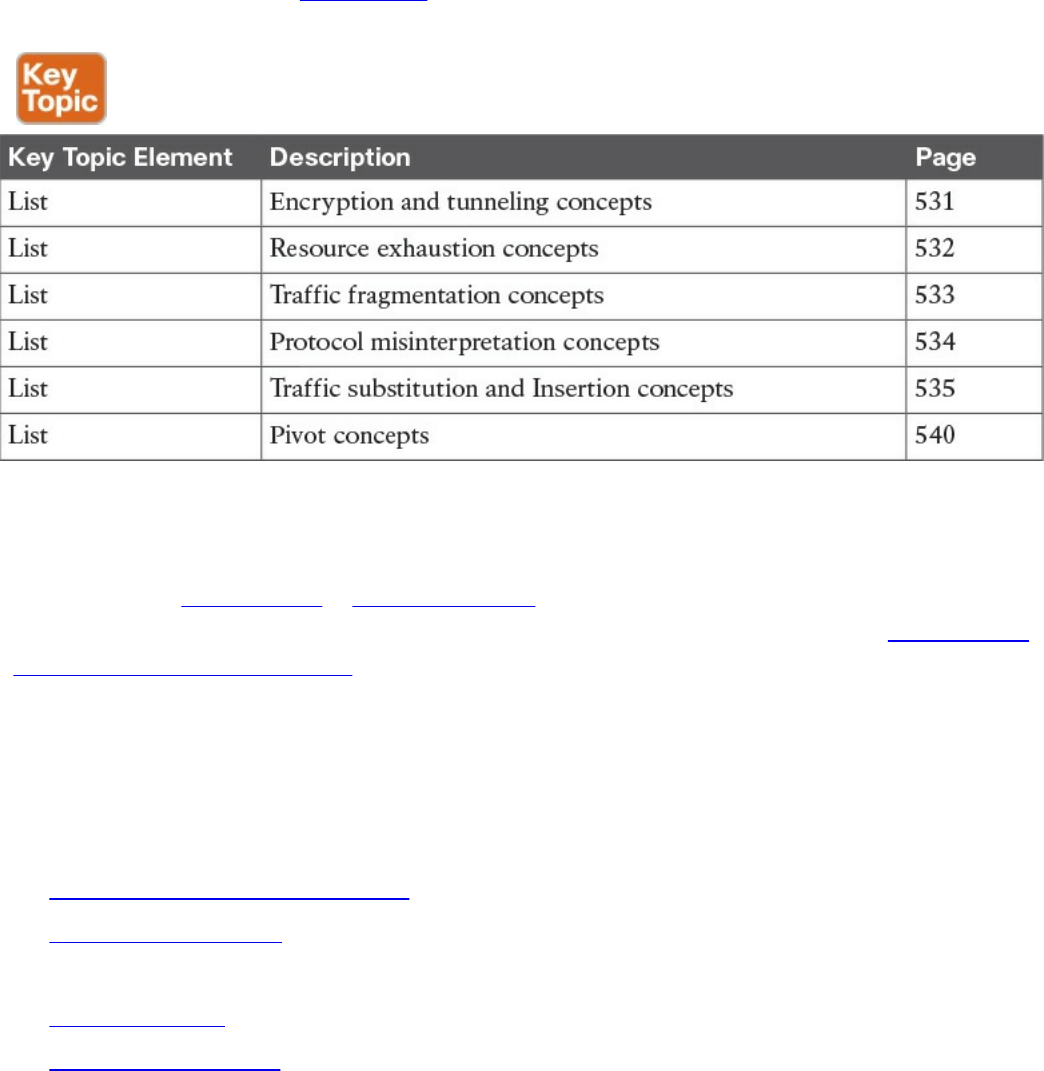

Chapter 14 Security Evasion Techniques

“Do I Know This Already?” Quiz

Foundation Topics

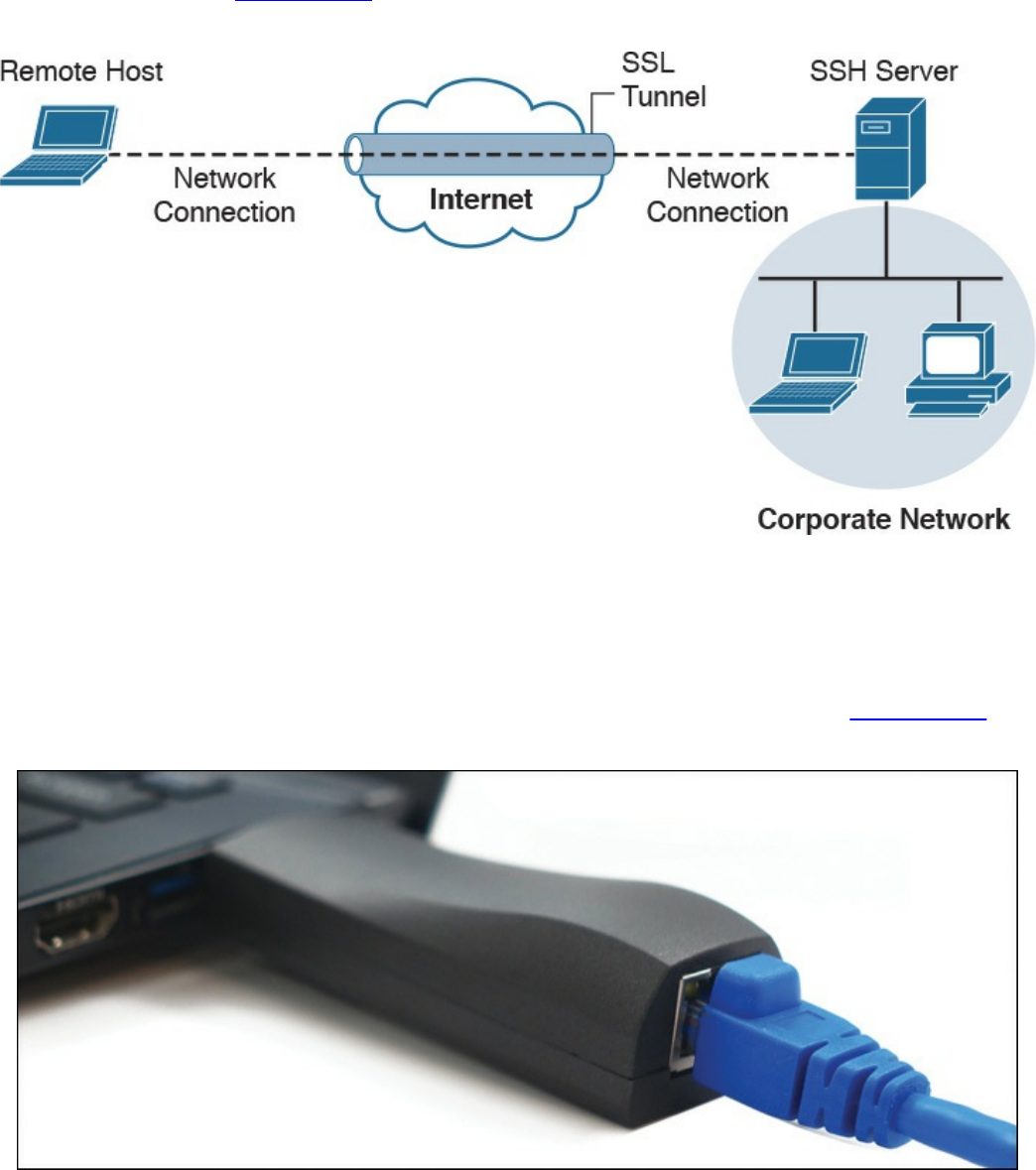

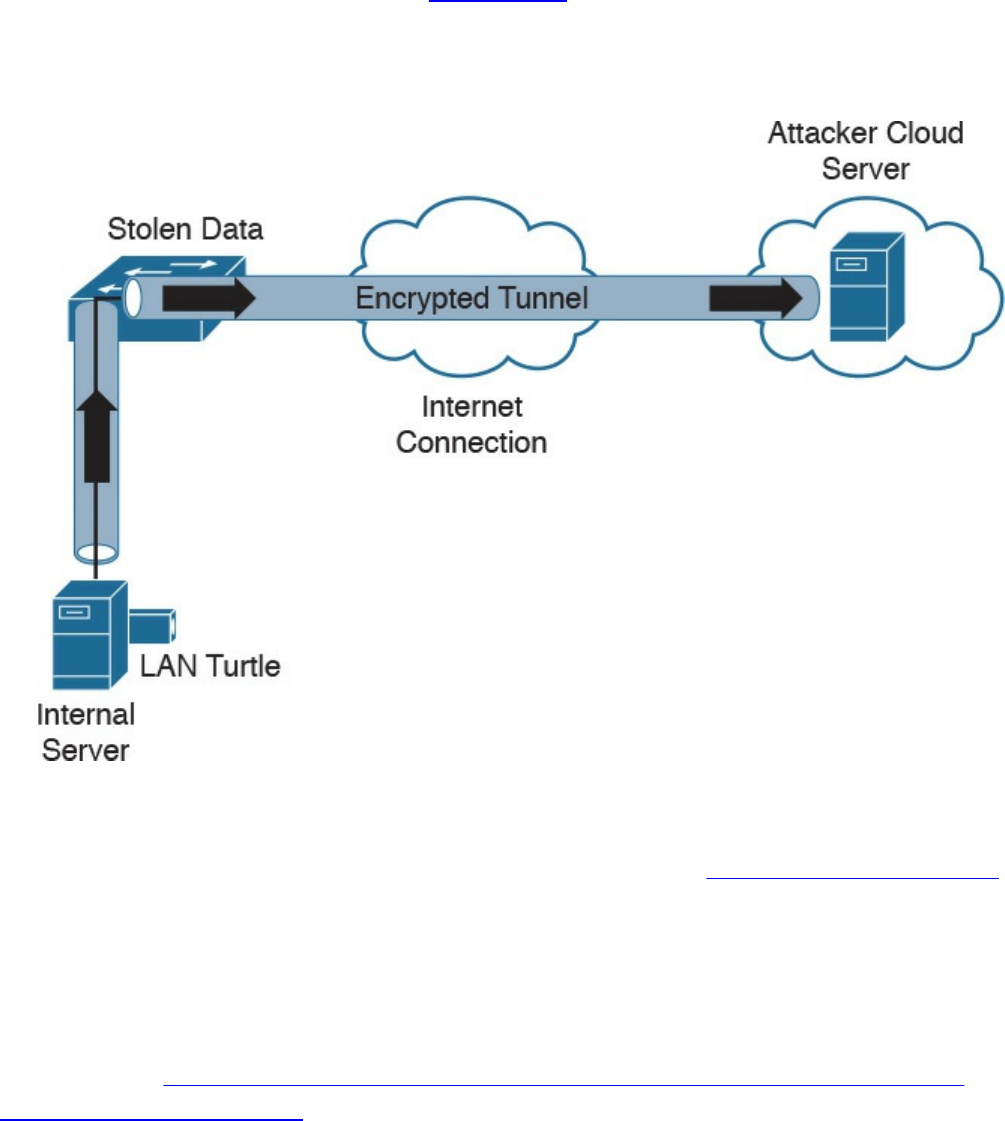

Encryption and Tunneling

Key Encryption and Tunneling Concepts

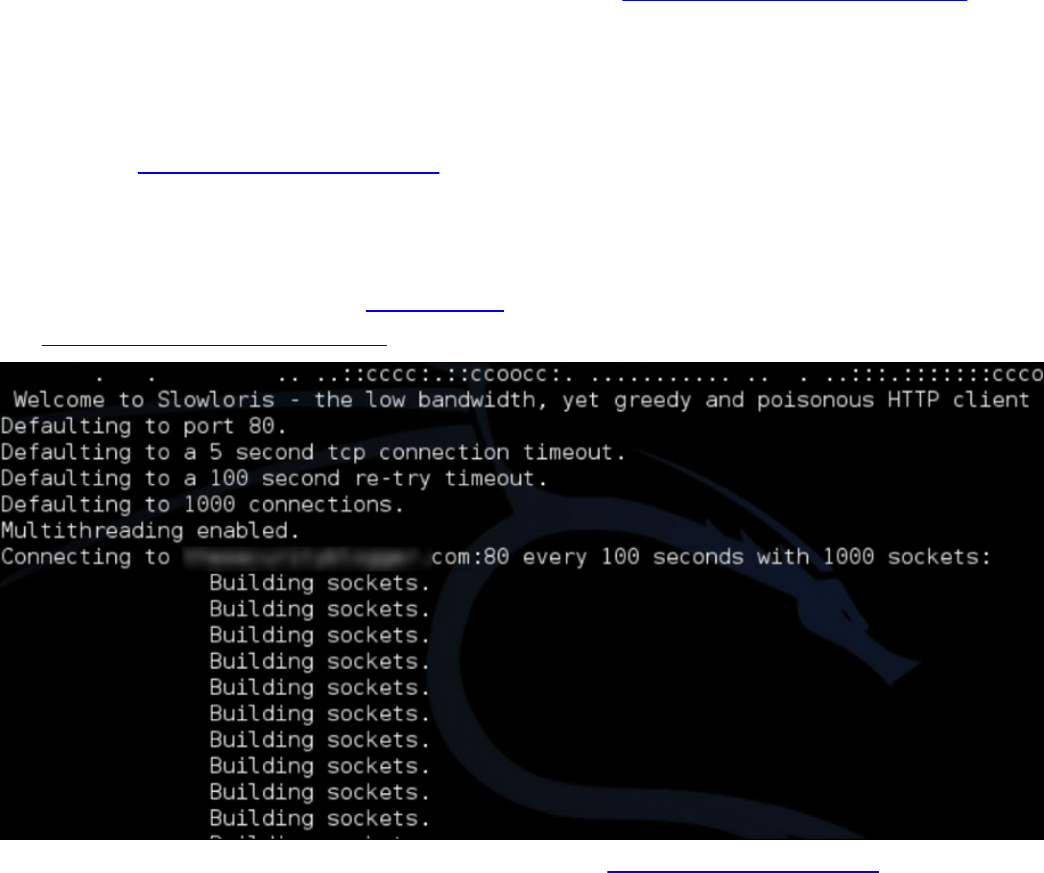

Resource Exhaustion

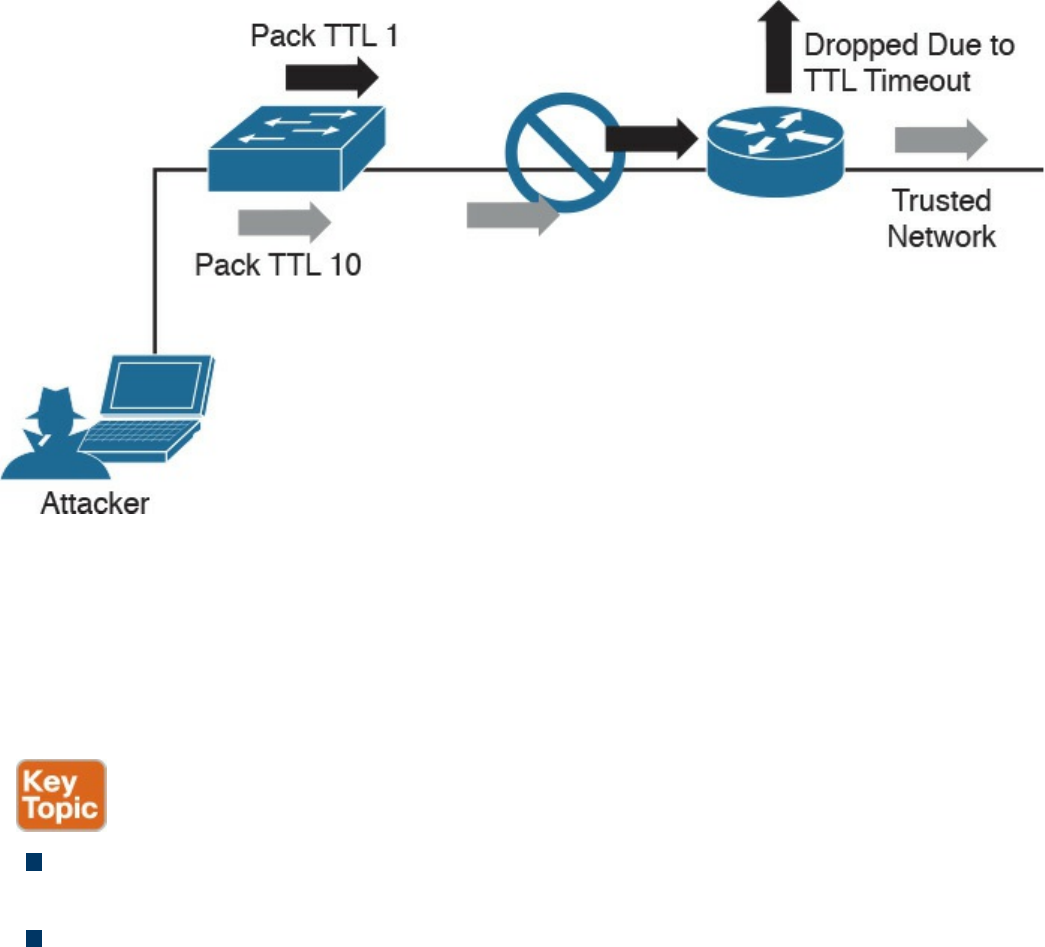

Traffic Fragmentation

Protocol-Level Misinterpretation

Traffic Timing, Substitution, and Insertion

Pivoting

Exam Preparation Tasks

Review All Key Topics

Complete Tables and Lists from Memory

Define Key Terms

Q&A

References and Further Reading

Part VI Final Preparation

Chapter 15 Final Preparation

Tools for Final Preparation

Pearson Cert Practice Test Engine and Questions on the Website

Accessing the Pearson Test Prep Software Online

Accessing the Pearson Test Prep Software Offline

Customizing Your Exams

Updating Your Exams

Premium Edition

The Cisco Learning Network

Memory Tables

Chapter-Ending Review Tools

Suggested Plan for Final Review/Study

Summary

Part VII Appendixes

Appendix A Answers to the “Do I Know This Already?” Quizzes and Q&A Questions

Glossary

Index

Elements Available on the Book Website

Appendix B Memory Tables

Appendix C Memory Tables Answer Key

Appendix D Study Planner

Command Syntax Conventions

The conventions used to present command syntax in this book are the same conventions

used in the IOS Command Reference. The Command Reference describes these

conventions as follows:

Bold indicates commands and keywords that are entered literally as shown. In

actual configuration examples and output (not general command syntax), bold

indicates commands that are manually input by the user (such as a show command).

Italic indicates arguments for which you supply actual values.

Vertical bars (|) separate alternative, mutually exclusive elements.

Square brackets ([ ]) indicate an optional element.

Braces ({ }) indicate a required choice.

Braces within brackets ([{ }]) indicate a required choice within an optional

element.

Introduction

Congratulations! If you are reading this, you have in your possession a powerful tool

that can help you to:

Improve your awareness and knowledge of cyber security fundamentals

Increase your skill level related to the implementation of that security

Prepare for the CCNA Cyber Ops SECFND certification exam

Whether you are preparing for the CCNA Cyber Ops certification or just changing

careers to cyber security, this book will help you gain the knowledge you need to get

started and prepared. We wrote this book with you in mind, and together

we will discover the critical ingredients that make up the recipe for a secure network

and how to succeed in cyber security operations. By focusing on covering the objectives

for the CCNA Cyber Ops SECFND exam and integrating that with real-world best

practices and examples, we created this content with the intention of being your

personal tour guides as we take you on a journey through the world of network security.

The CCNA Cyber Ops: Understanding Cisco Cybersecurity Fundamentals (SECFND)

210-250 exam is required for the CCNA Cyber Ops certification. This book covers all

the topics listed in Cisco’s exam blueprint, and each chapter includes key topics and

preparation tasks to assist you in mastering this information. Reviewing tables and

answering practice test questions will help you exercise your knowledge in all subject areas.

About the 210-250 CCNA Cyber Ops SECFND Exam

The CCNA Cyber Ops: Understanding Cisco Cybersecurity Fundamentals (SECFND)

210-250 exam is the first of the two required exams to achieve the CCNA Cyber Ops

certification and is aligned with the job role of associate-level security operations

center (SOC) security analyst. The SECFND exam tests candidates’ understanding of

cyber security’s basic principles, foundational knowledge, and core skills needed to

grasp the more advanced associate-level materials in the second required exam:

Implementing Cisco Cybersecurity Operations (SECOPS).

The CCNA Cyber Ops: Understanding Cisco Cybersecurity Fundamentals (SECFND)

210-250 exam is a computer-based test that has 55 to 60 questions and a 90-minute time

limit. Because all exam information is managed by Cisco Systems and is therefore

subject to change, candidates should continually monitor the Cisco Systems site for

exam updates at http://www.cisco.com/c/en/us/training-events/training-certifications/exams/current-list/secfnd.html.

You can take the exam at Pearson VUE testing centers. You can register with VUE at

www.vue.com/cisco.

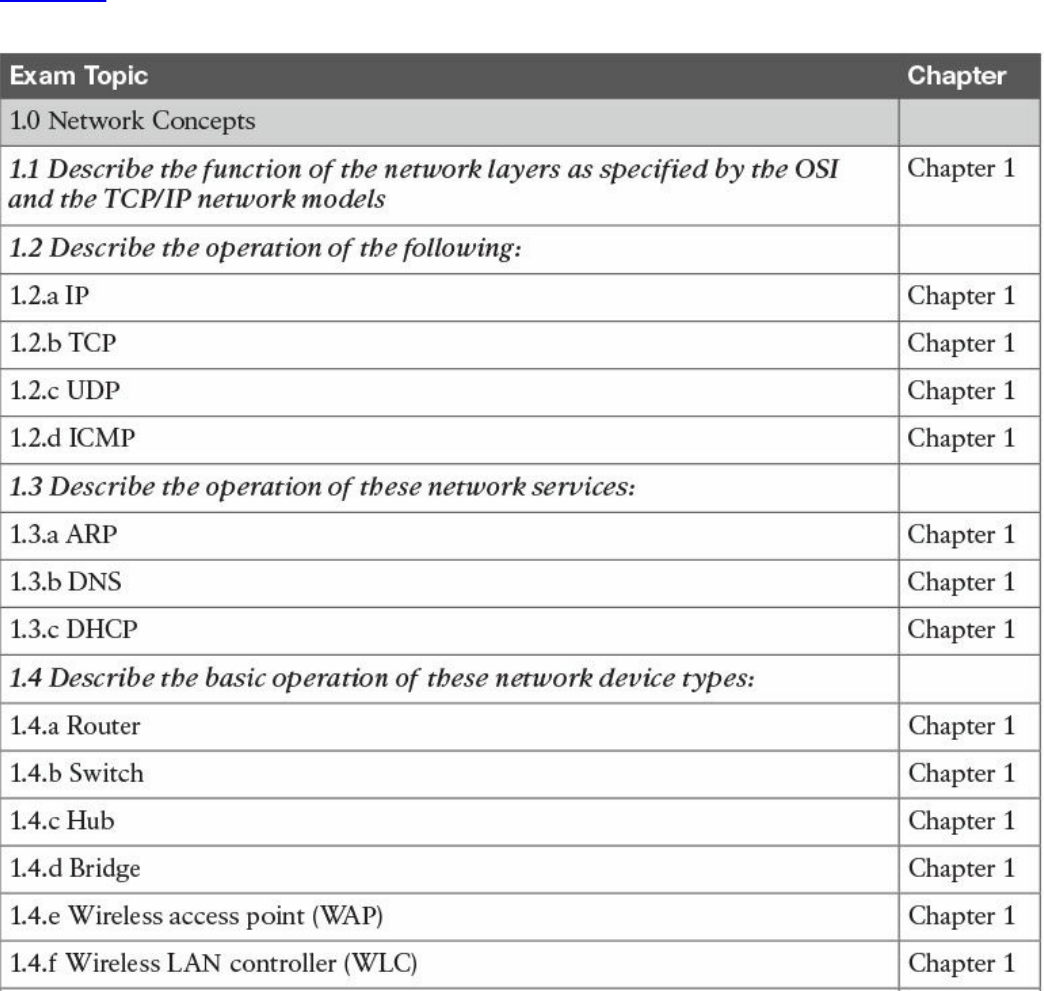

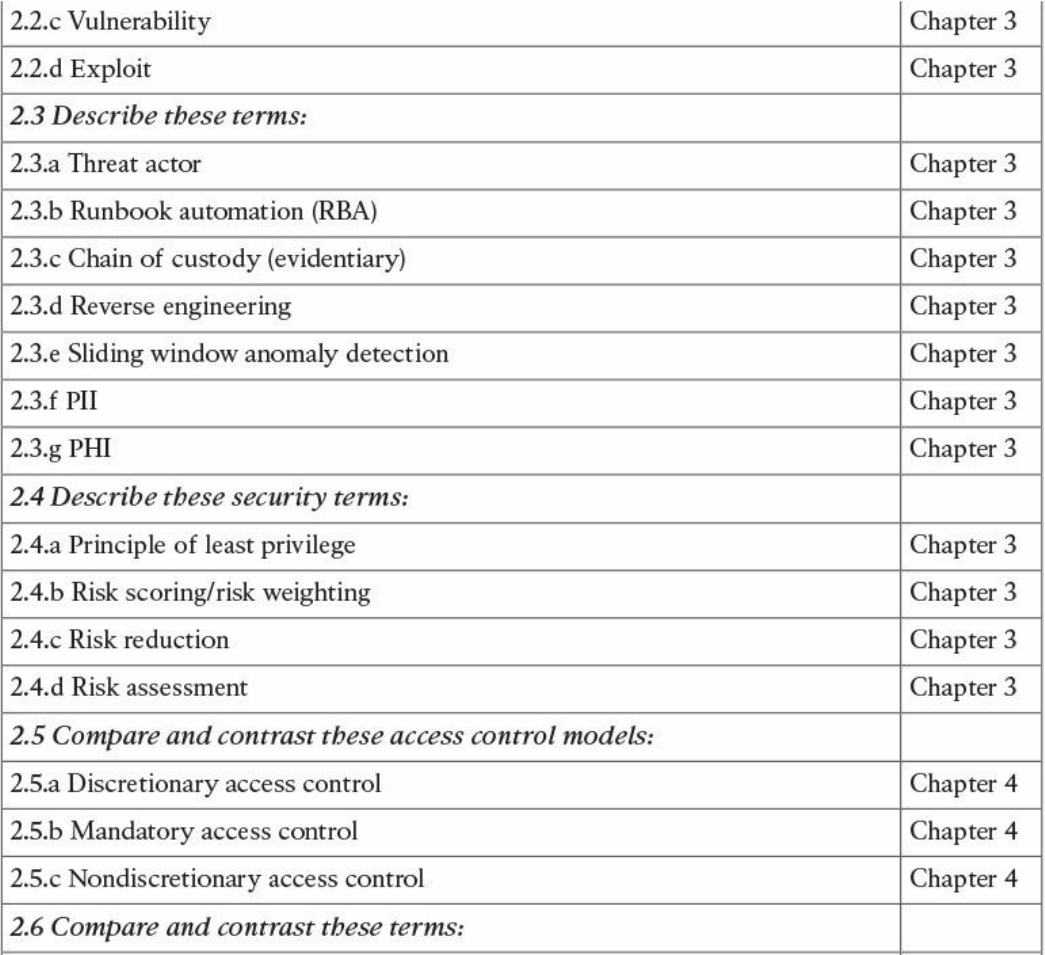

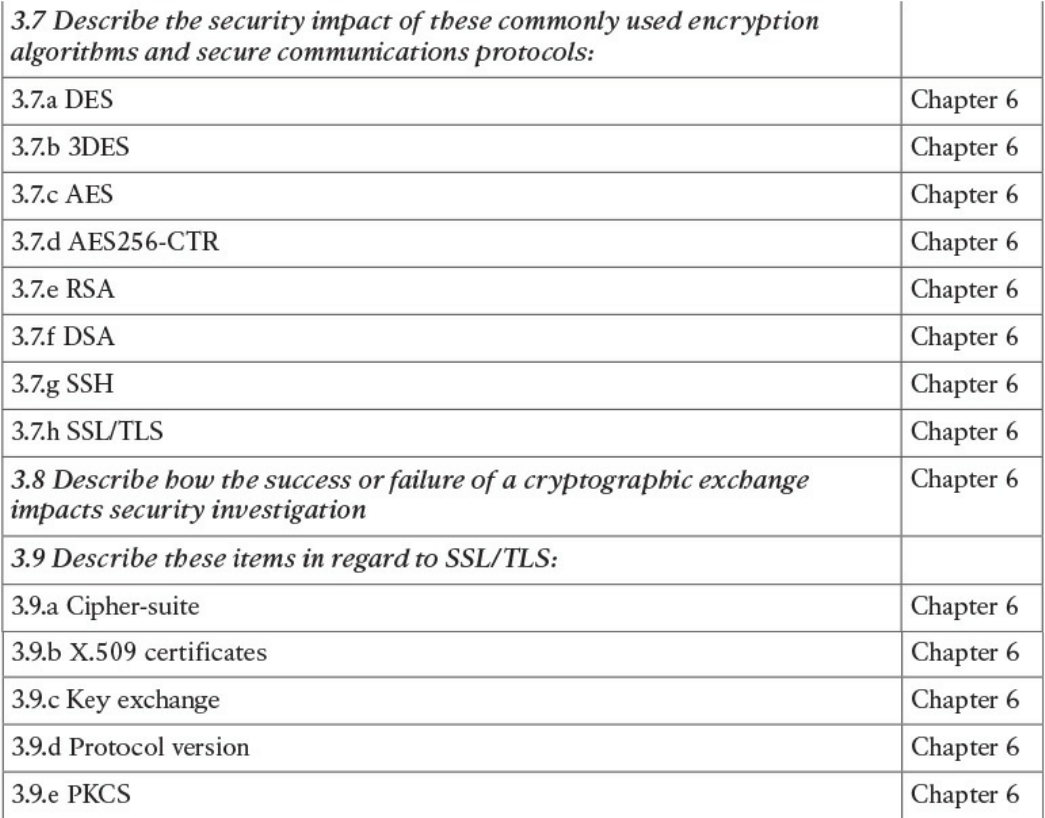

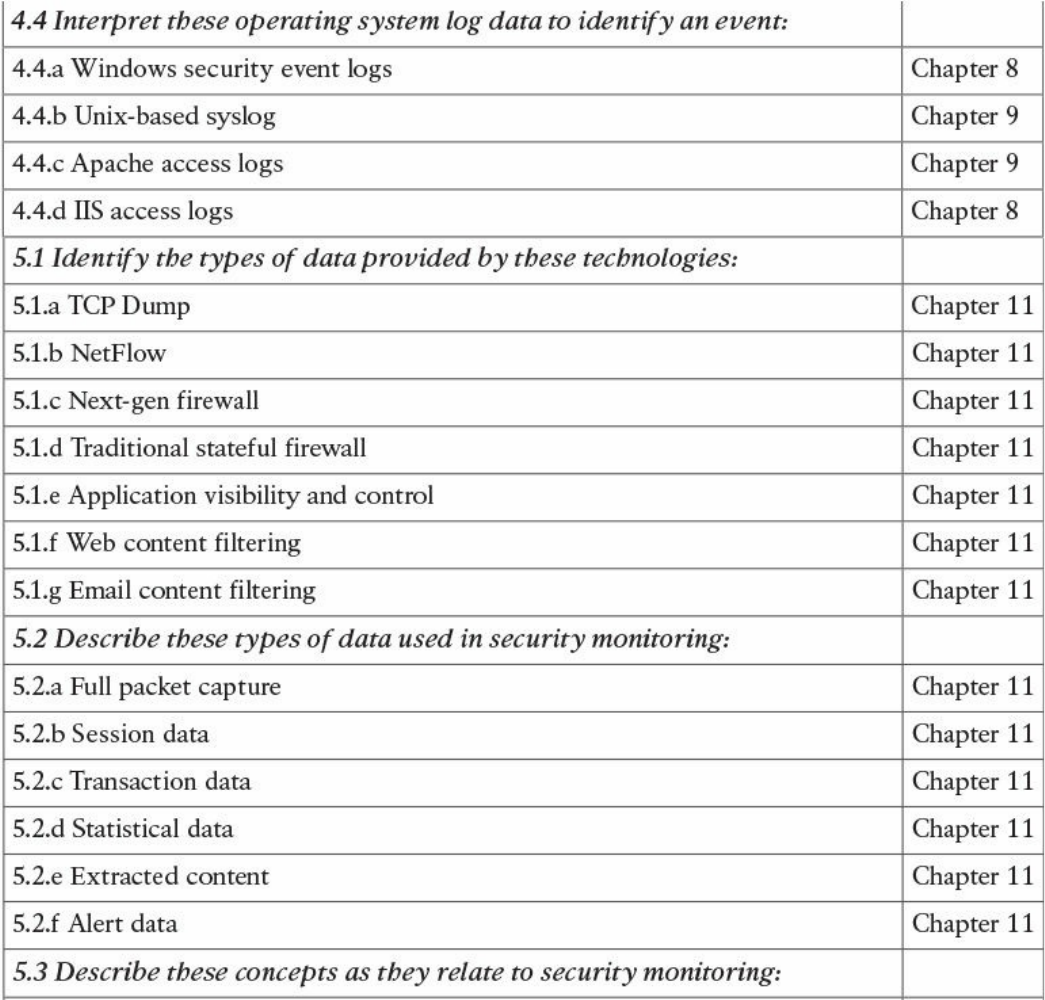

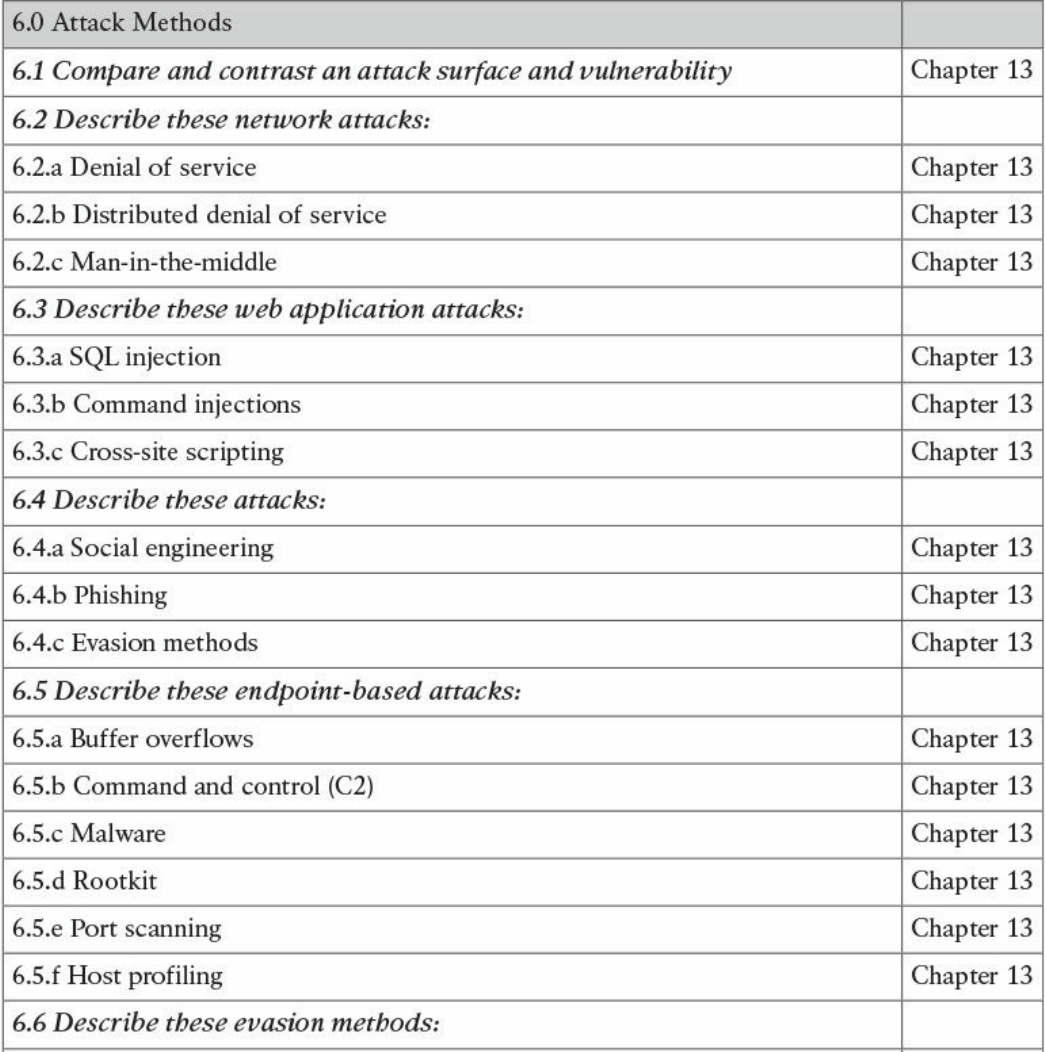

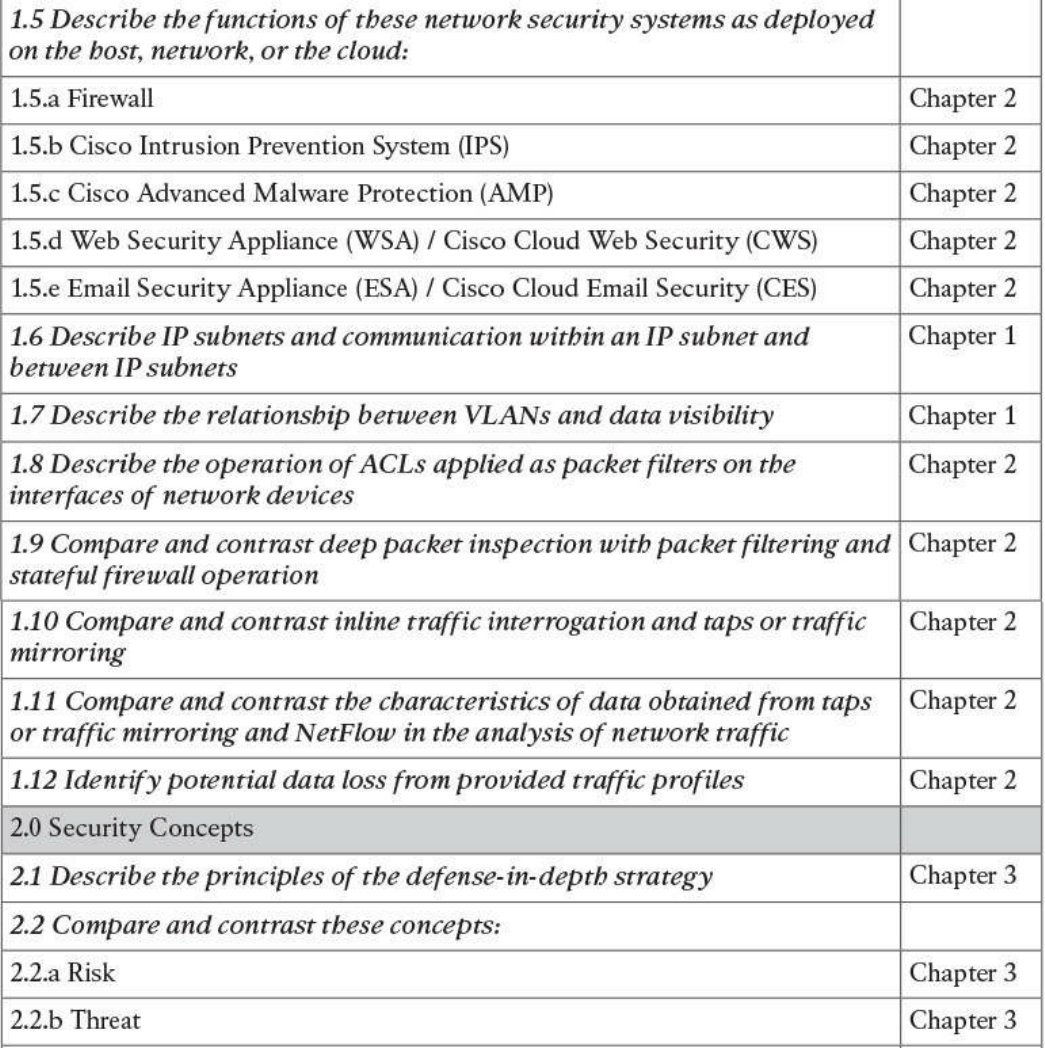

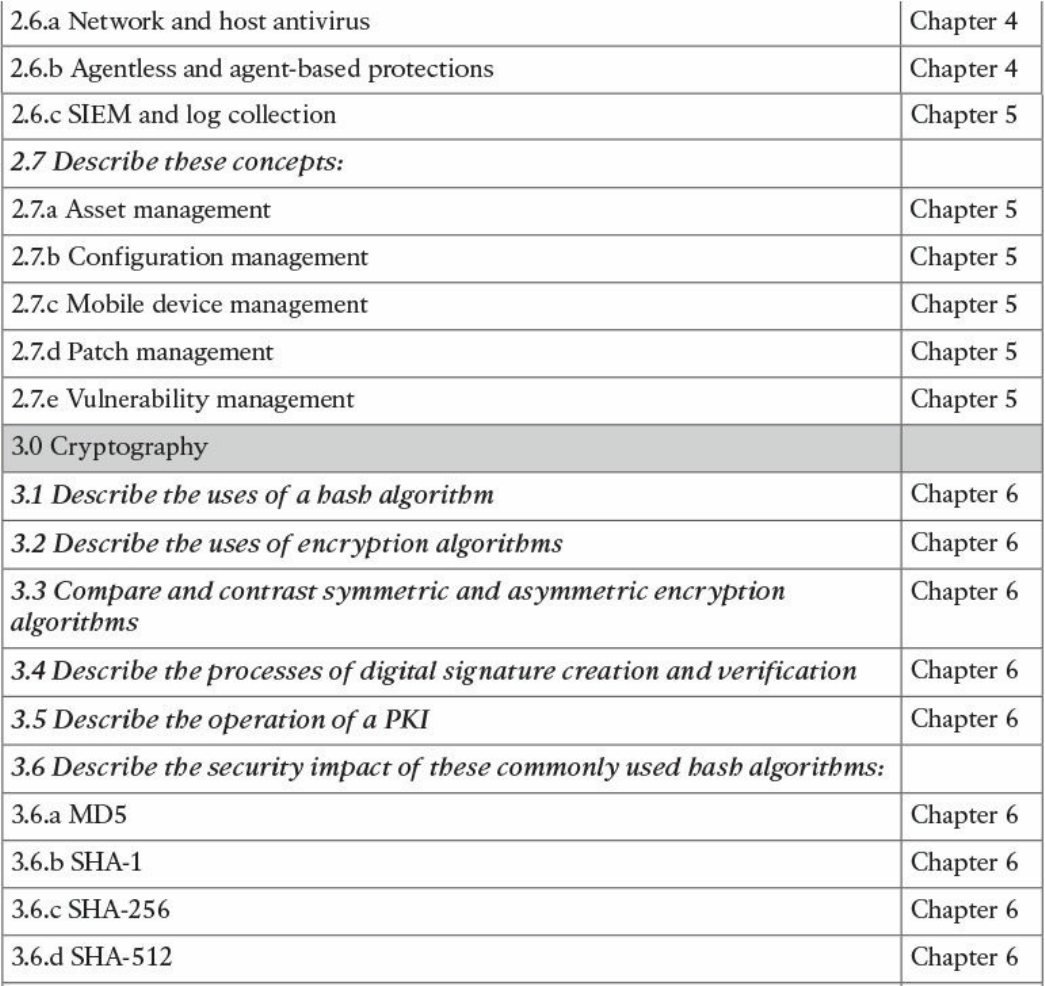

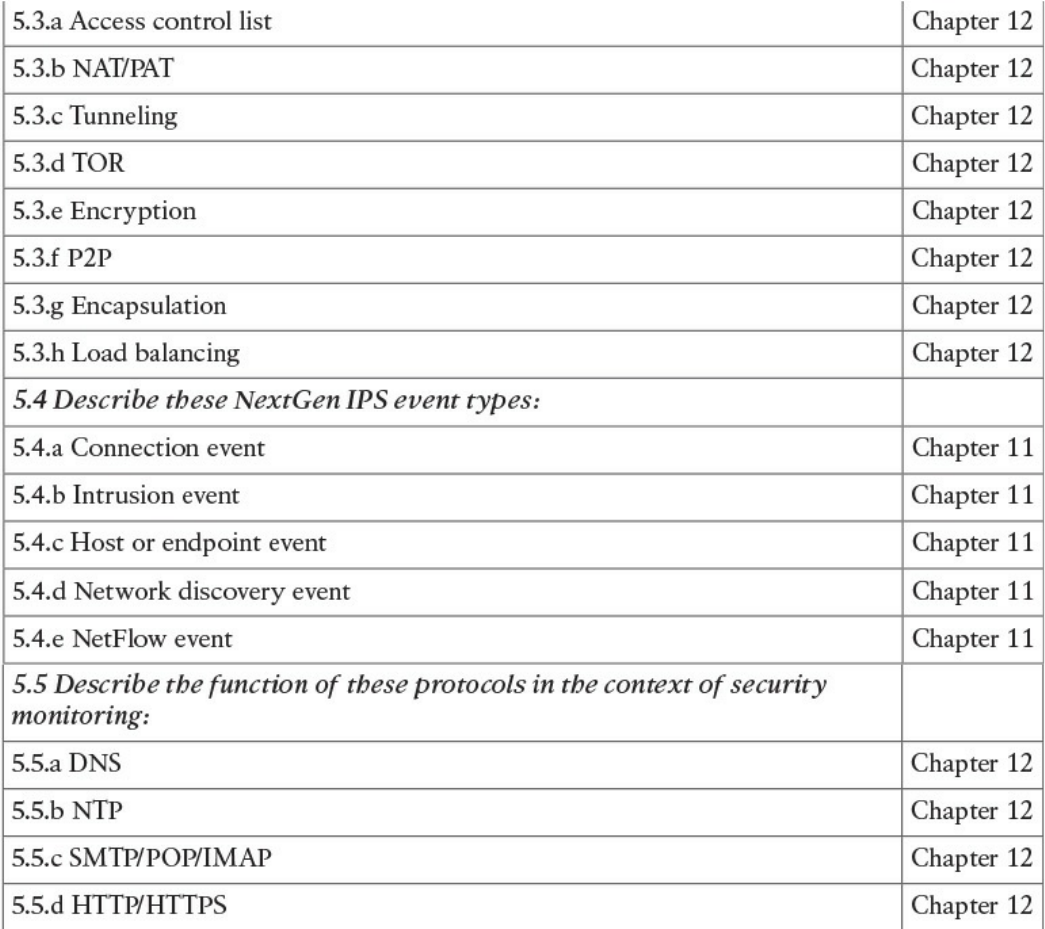

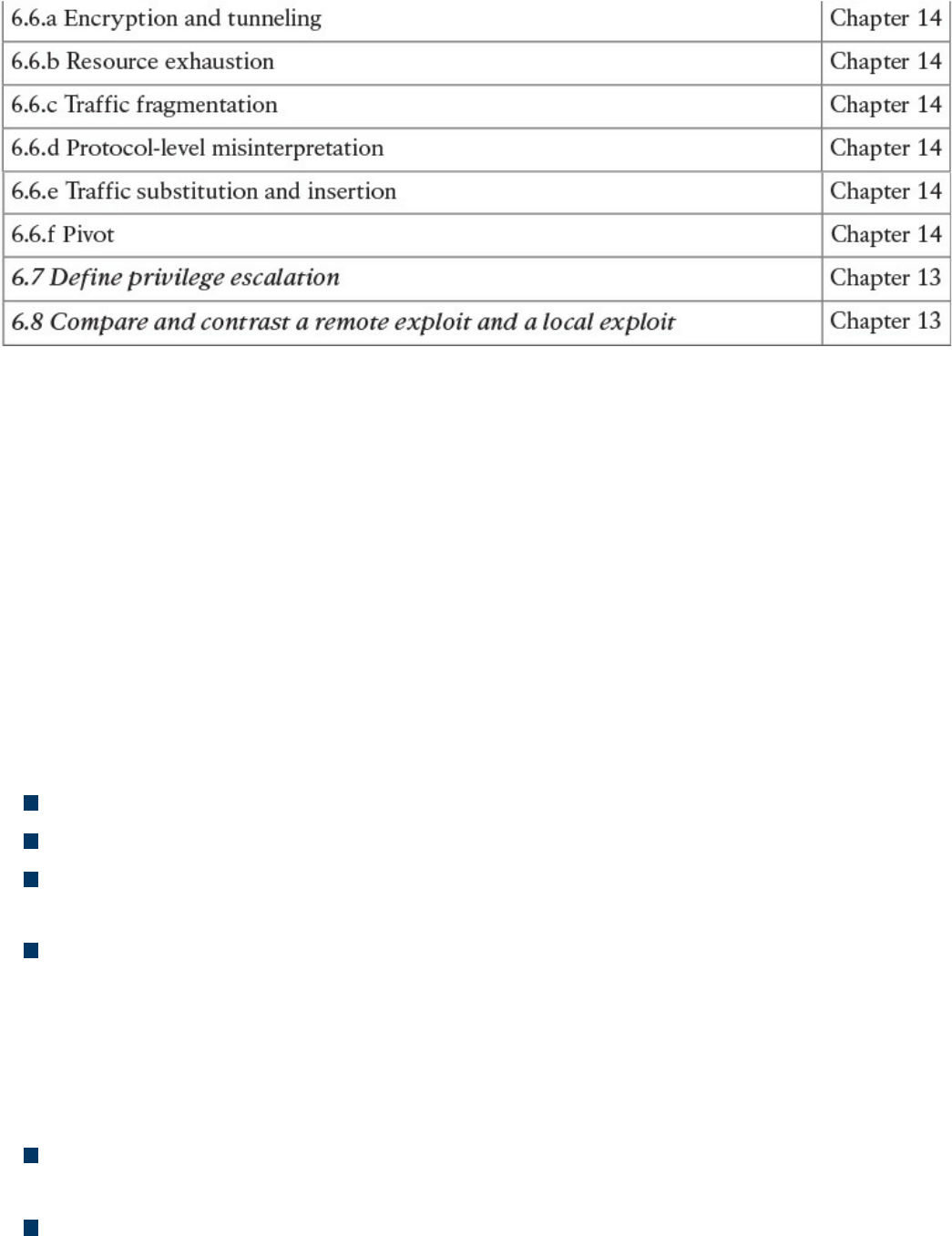

Table I-1 210-250 SECFND Exam Topics

About the CCNA Cyber Ops SECFND 210-250 Official Cert Guide

This book maps to the topic areas of the 210-250 SECFND exam and uses a number of

features to help you understand the topics and prepare for the exam.

Objectives and Methods

This book uses several key methodologies to help you discover the exam topics on

which you need more review, to help you fully understand and remember those details,

and to help you prove to yourself that you have retained your knowledge of those topics.

This book does not try to help you pass the exam through memorization alone, but by truly

learning and understanding the topics. This book is designed to help you pass the

SECFND exam by using the following methods:

Helping you discover which exam topics you have not mastered

Providing explanations and information to fill in your knowledge gaps

Supplying exercises that enhance your ability to recall and deduce the answers to

test questions

Providing practice exercises on the topics and the testing process via test questions

on the companion website

Book Features

To help you customize your study time using this book, the core chapters have several

features that help you make the best use of your time:

“Do I Know This Already?” quiz: Each chapter begins with a quiz that helps you

determine how much time you need to spend studying that chapter.

Foundation Topics: These are the core sections of each chapter. They explain the

concepts for the topics in that chapter.

Exam Preparation Tasks: After the “Foundation Topics” section of each chapter,

the “Exam Preparation Tasks” section lists a series of study activities that you

should do at the end of the chapter. Each chapter includes the activities that make

the most sense for studying the topics in that chapter:

Review All the Key Topics: The Key Topic icon appears next to the most

important items in the “Foundation Topics” section of the chapter. The “Review

All the Key Topics” activity lists the key topics from the chapter, along with

their page numbers. Although the contents of the entire chapter could be on the

exam, you should definitely know the information listed in each key topic, so you

should review these.

Complete the Tables and Lists from Memory: To help you memorize some

lists of facts, many of the more important lists and tables from the chapter are

included in a document on the companion website. This document lists only

partial information, allowing you to complete the table or list.

Define Key Terms: Although the exam is unlikely to ask you to define a term,

the CCNA Cyber Ops exams do require that you learn and know a lot of

networking terminology. This section lists the most important terms from the

chapter, asking you to write a short definition and compare your answer to the

glossary at the end of the book.

Q&A: Confirm that you understand the content you just covered.

Web-based practice exam: The companion website includes the Pearson Cert

Practice Test engine, which allows you to take practice exam questions. Use it to

prepare with a sample exam and to pinpoint topics where you need more study.

How This Book Is Organized

This book contains 14 core chapters—Chapters 1 through 14. Chapter 15 includes some

preparation tips and suggestions for how to approach the exam. Each core chapter

covers a subset of the topics on the CCNA Cyber Ops SECFND exam. The core

chapters are organized into parts. They cover the following topics:

Part I: Network Concepts

Chapter 1: Fundamentals of Networking Protocols and Networking Devices

covers the networking technology fundamentals such as the OSI model and different

protocols, including IP, TCP, UDP, ICMP, DNS, DHCP, ARP, and others. It also

covers the basic operations of network infrastructure devices such as routers,

switches, hubs, wireless access points, and wireless LAN controllers.

Chapter 2: Network Security Devices and Cloud Services covers the

fundamentals of firewalls, intrusion prevention systems (IPSs), Advanced Malware

Protection (AMP), and fundamentals of the Cisco Web Security Appliance (WSA),

Cisco Cloud Web Security (CWS), Cisco Email Security Appliance (ESA), and the

Cisco Cloud Email Security (CES) service. This chapter also describes the

operation of access control lists applied as packet filters on the interfaces of

network devices and compares and contrasts deep packet inspection with packet

filtering and stateful firewall operations. It provides details about inline traffic

interrogation and taps or traffic mirroring. This chapter compares and contrasts the

characteristics of data obtained from taps or traffic mirroring and NetFlow in the

analysis of network traffic.

Part II: Security Concepts

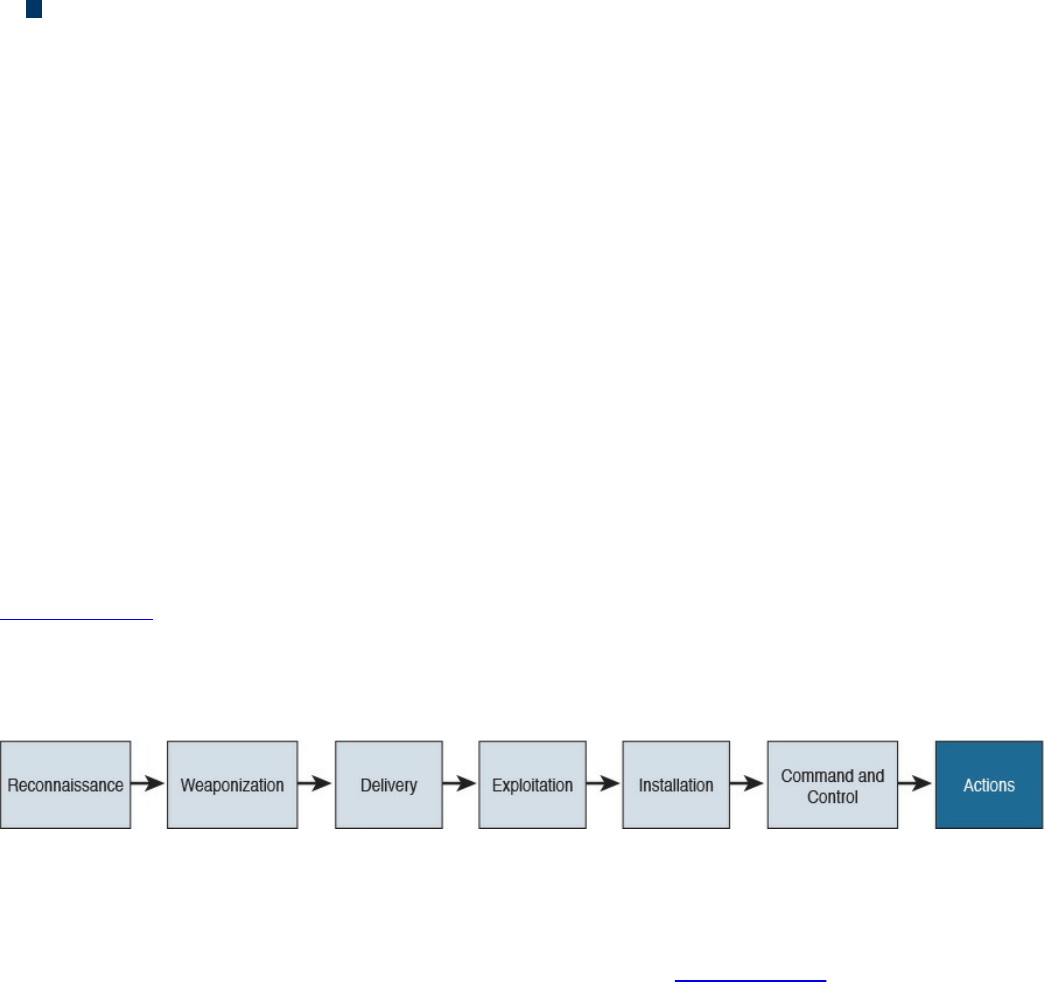

Chapter 3: Security Principles covers the principles of the defense-in-depth

strategy and compares and contrasts the concepts of risks, threats, vulnerabilities,

and exploits. This chapter also defines threat actor, runbook automation (RBA),

chain of custody (evidentiary), reverse engineering, sliding window anomaly

detection, personally identifiable information (PII), protected health information

(PHI), as well as the principle of least privilege and how to perform separation of

duties. It also covers the concepts of risk scoring, risk weighting, risk reduction,

and how to perform overall risk assessments.

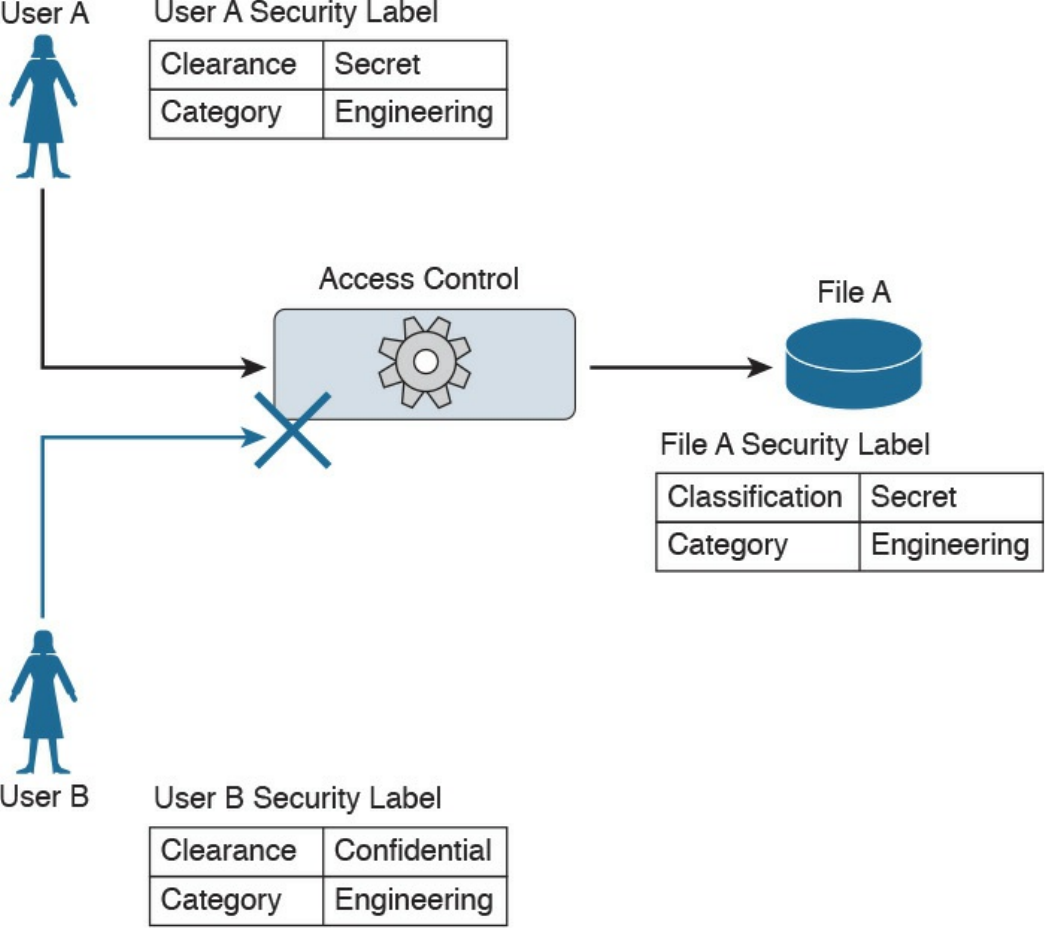

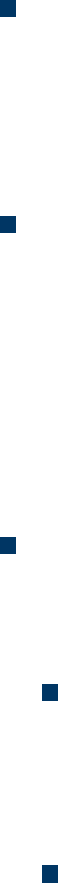

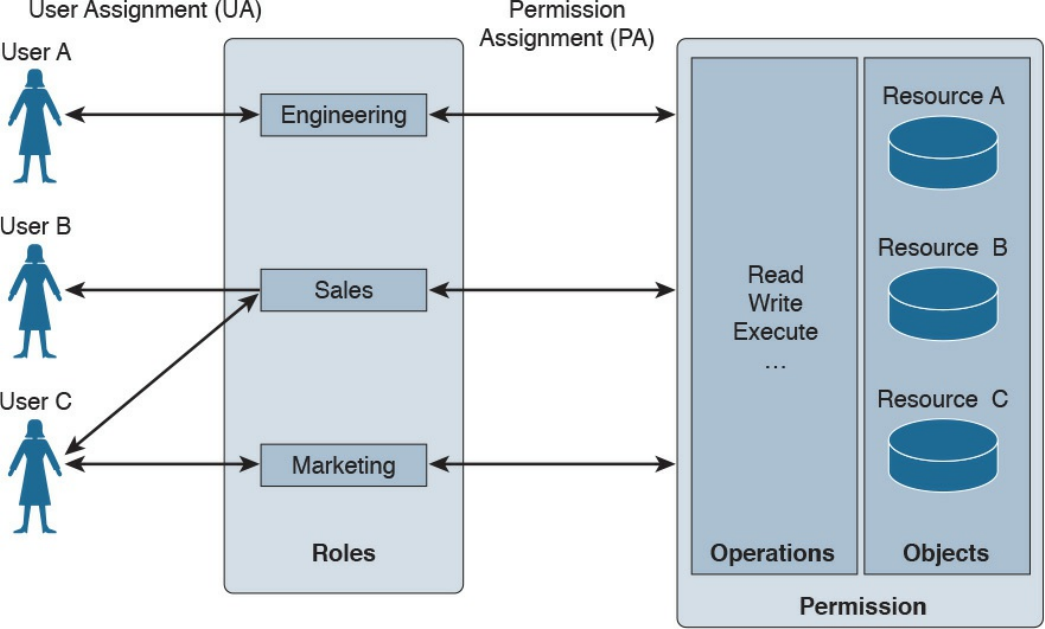

Chapter 4: Introduction to Access Controls covers the foundation of access

control and management. It provides an overview of authentication, authorization,

and accounting principles, and introduces some of the most used access control

models, including discretionary access control (DAC), mandatory access control

(MAC), role-based access control (RBAC), and attribute-based access control

(ABAC). Also, this chapter covers the actual implementation of access control,

such as AAA protocols, port security, 802.1x, Cisco TrustSec, intrusion prevention

and detection, and antimalware.

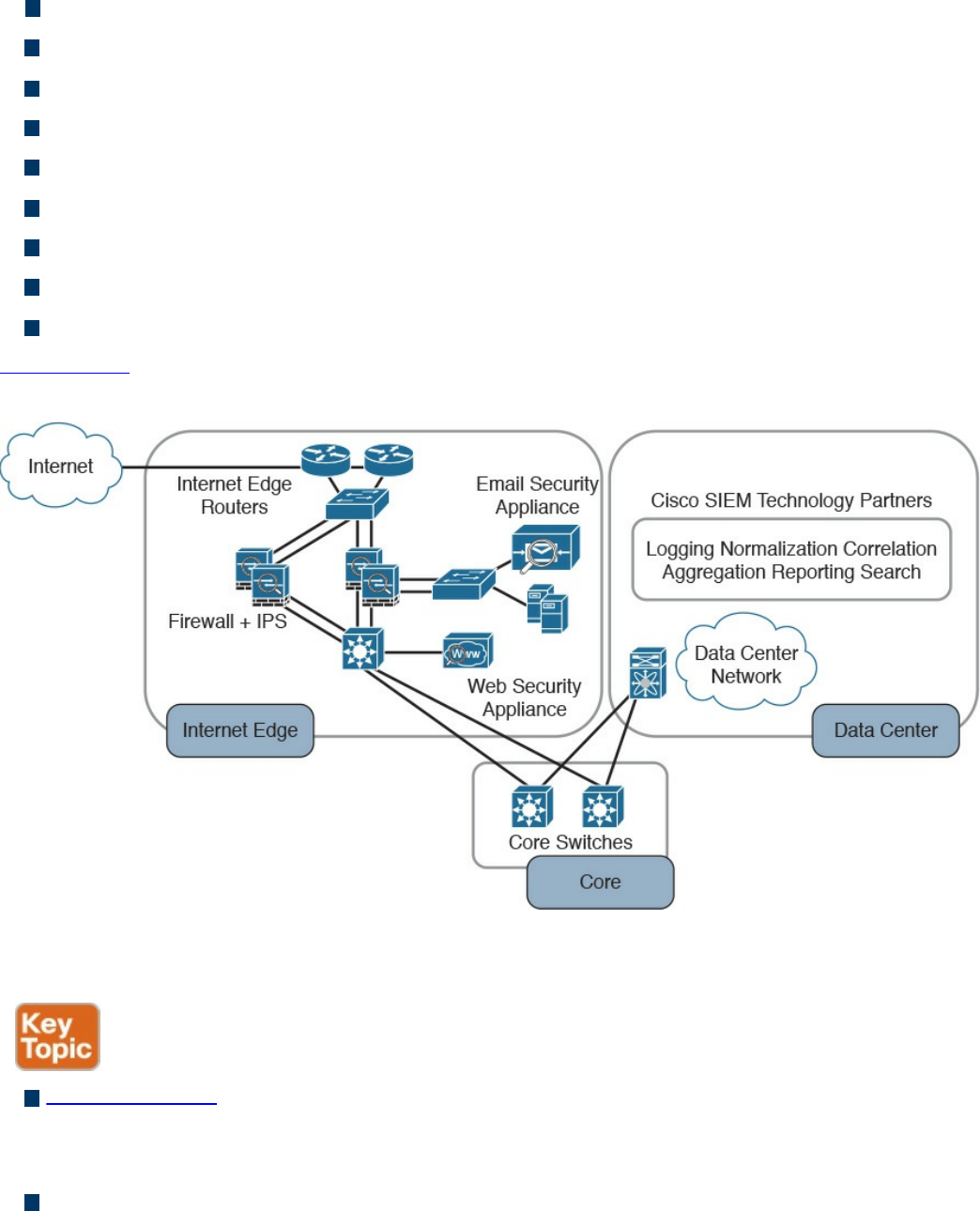

Chapter 5: Introduction to Security Operations Management covers the

foundation of security operations management. Specifically, it provides an

overview of identity management, protocol and technologies, asset security

management, change and configuration management, mobile device management,

event and logging management, including Security Information and Event

Management (SIEM) technologies, vulnerability management, and patch

management.

Part III: Cryptography

Chapter 6: Fundamentals of Cryptography and Public Key Infrastructure (PKI)

covers the different hashing and encryption algorithms in the industry. It provides a

comparison of symmetric and asymmetric encryption algorithms and an introduction

of public key infrastructure (PKI), the operations of a PKI, and an overview of the

IPsec, SSL, and TLS protocols.

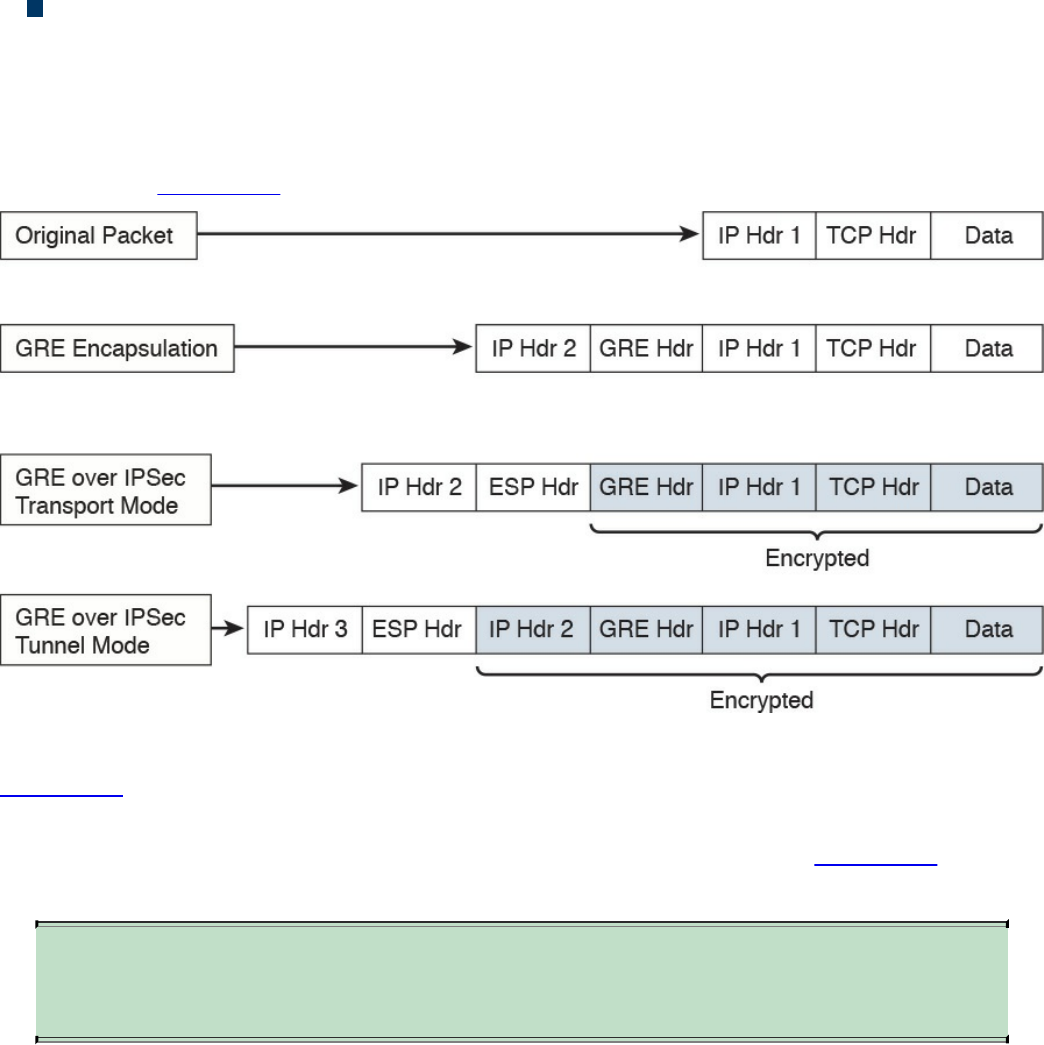

Chapter 7: Introduction to Virtual Private Networks (VPNs) provides an

introduction to remote access and site-to-site VPNs, different deployment

scenarios, and the VPN solutions provided by Cisco.

Part IV: Host-Based Analysis

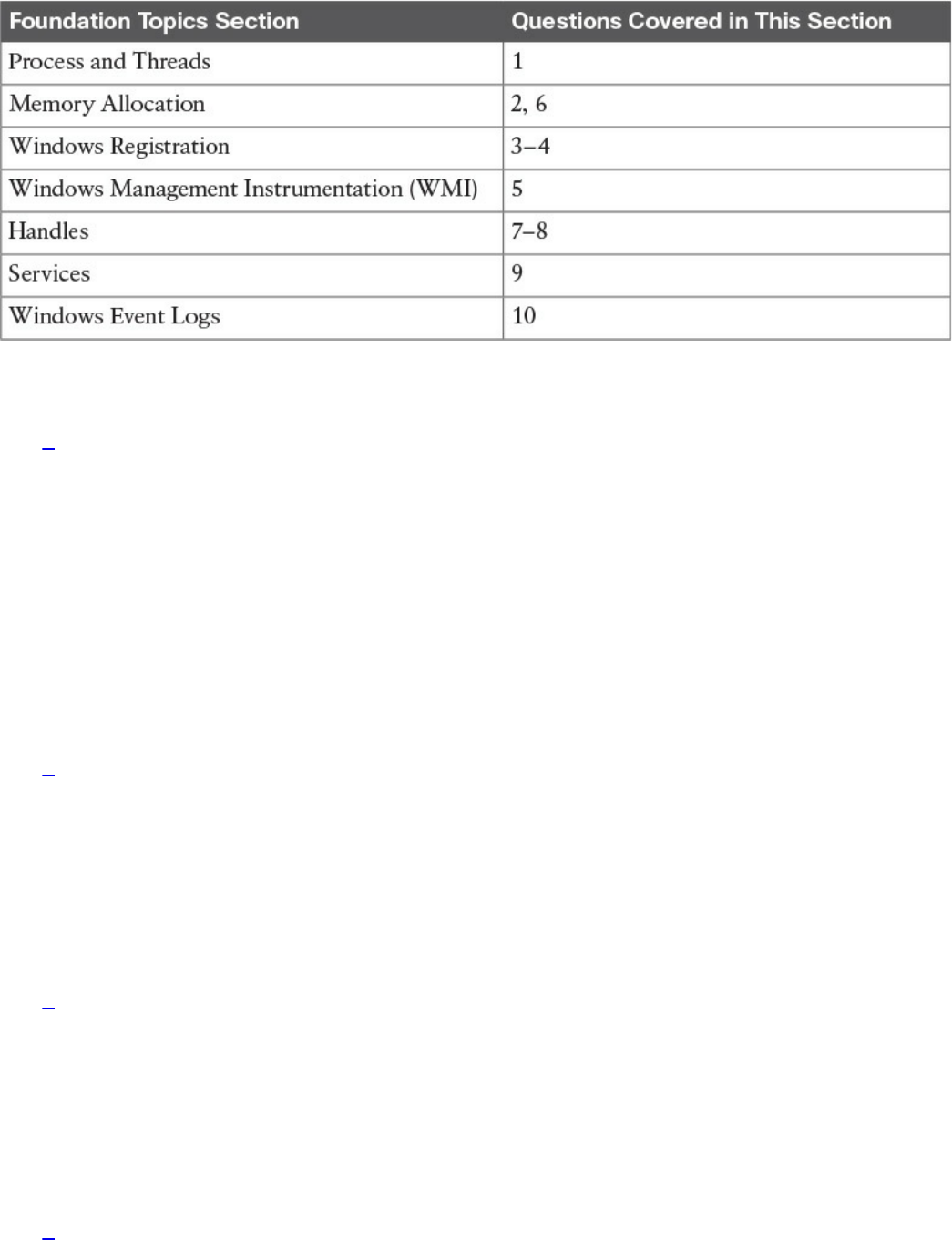

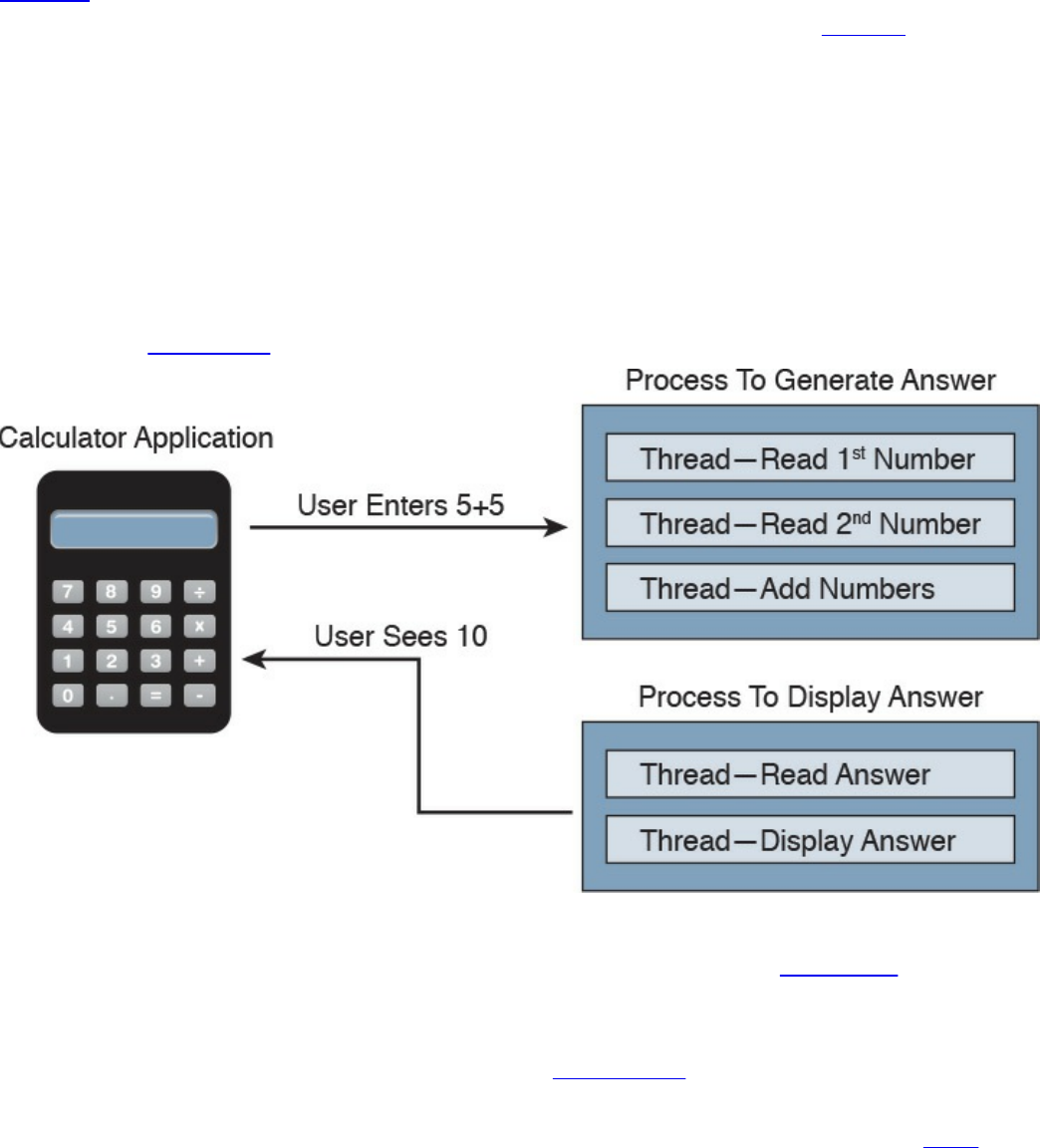

Chapter 8: Windows-Based Analysis covers the basics of how a system running

Windows handles applications. This includes details about how memory is used as

well as how resources are processed by the operating system. These skills are

essential for maximizing performance and securing a Windows system.

Chapter 9: Linux- and Mac OS X–Based Analysis covers how things work inside

a UNIX environment. This includes process execution and event logging. Learning

how the environment functions will not only improve your technical skills but can

also be used to build a strategy for securing these systems.

Chapter 10: Endpoint Security Technologies covers the functionality of endpoint

security technologies, including host-based intrusion detection, host-based

firewalls, application-level whitelisting and blacklisting, as well as systems-based

sandboxing.

Part V: Security Monitoring and Attack Methods

Chapter 11: Network and Host Telemetry covers the different types of data

provided by network and host-based telemetry technologies, including NetFlow,

traditional and next-generation firewalls, packet captures, application visibility and

control, and web and email content filtering. It also provides an overview of how

full packet captures, session data, transaction logs, and security alert data are used

in security operations and security monitoring.

Chapter 12: Security Monitoring Operational Challenges covers the different

operational challenges, including Tor, access control lists, tunneling, peer-to-peer

(P2P) communication, encapsulation, load balancing, and other technologies.

Chapter 13: Types of Attacks and Vulnerabilities covers the different types of

cyber security attacks and vulnerabilities and how they are carried out by threat

actors nowadays.

Chapter 14: Security Evasion Techniques covers how attackers obtain stealth as

well as the tricks used to negatively impact detection and forensic technologies.

Topics include encryption, exhausting resources, fragmenting traffic, manipulating

protocols, and pivoting within a compromised environment.

Part VI: Final Preparation

Chapter 15: Final Preparation identifies the tools for final exam preparation and

helps you develop an effective study plan. It contains tips on how to best use the

web-based material to study.

Part VII: Appendixes

Appendix A: Answers to the “Do I Know This Already?” Quizzes and Q&A

Questions includes the answers to all the questions from Chapters 1 through 14.

Appendix B: Memory Tables (a website-only appendix) contains the key tables

and lists from each chapter, with some of the contents removed. You can print this

appendix and, as a memory exercise, complete the tables and lists. The goal is to

help you memorize facts that can be useful on the exam. This appendix is available

in PDF format at the book website; it is not in the printed book.

Appendix C: Memory Tables Answer Key (a website-only appendix) contains the

answer key for the memory tables in Appendix B. This appendix is available in

PDF format at the book website; it is not in the printed book.

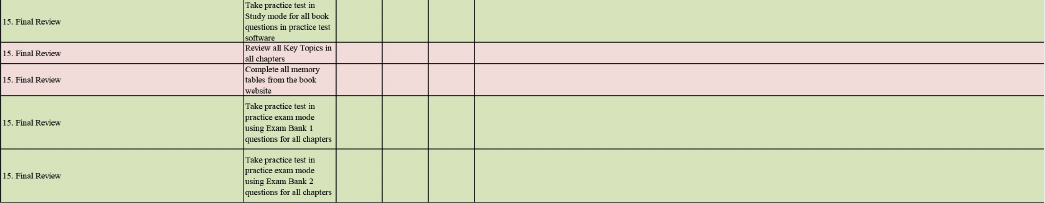

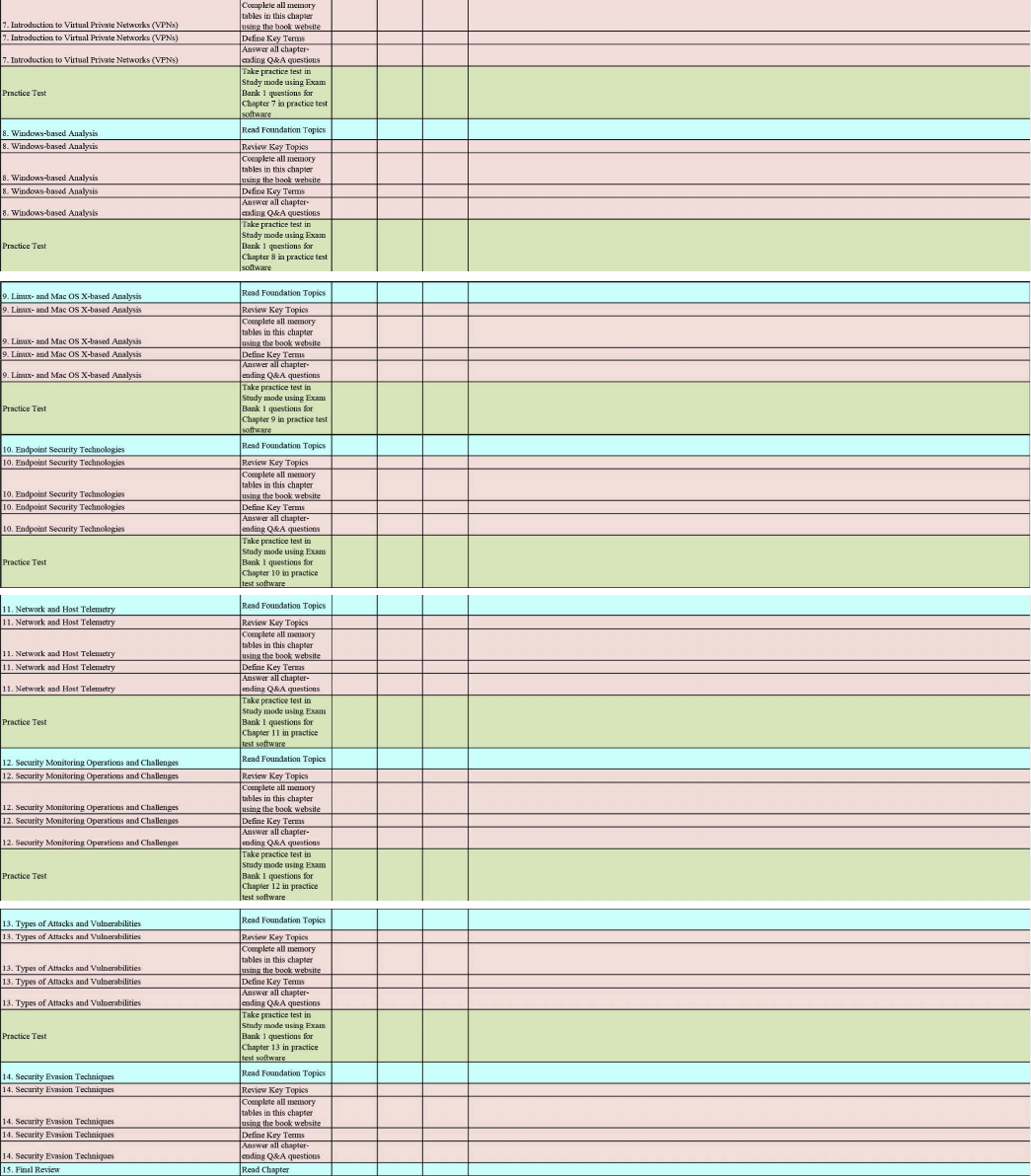

Appendix D: Study Planner is a spreadsheet, available from the book website,

with major study milestones, where you can track your progress throughout your

study.

Companion Website

Register this book to get access to the Pearson Test Prep practice test software and other

study materials, plus additional bonus content. Check this site regularly for new and

updated postings written by the authors that provide further insight into the more

troublesome topics on the exam. Be sure to check the box that you would like to hear

from us to receive updates and exclusive discounts on future editions of this product or

related products.

To access this companion website, follow these steps:

1. Go to www.pearsonITcertification.com/register and log in or create a new

account.

2. Enter the ISBN 9781587147029.

3. Answer the challenge question as proof of purchase.

4. Click the “Access Bonus Content” link in the Registered Products section of your

account page, to be taken to the page where your downloadable content is

available.

Please note that many of our companion content files can be very large, especially

image and video files.

If you are unable to locate the files for this title by following the steps, please visit

www.pearsonITcertification.com/contact and select the “Site Problems/Comments”

option. Our customer service representatives will assist you.

Pearson Test Prep Practice Test Software

As noted previously, this book comes complete with the Pearson Test Prep practice test

software containing two full exams. These practice tests are available to you either

online or as an offline Windows application. To access the practice exams that were

developed with this book, please see the instructions in the card inserted in the sleeve in

the back of the book. This card includes a unique access code that enables you to

activate your exams in the Pearson Test Prep software.

Accessing the Pearson Test Prep Software Online

The online version of this software can be used on any device with a browser and

connectivity to the Internet, including desktop machines, tablets, and smartphones. To

start using your practice exams online, simply follow these steps:

1. Go to http://www.PearsonTestPrep.com.

2. Select Pearson IT Certification as your product group.

3. Enter your email/password for your account. If you don’t have an account on

PearsonITCertification.com or CiscoPress.com, you will need to establish one by

going to PearsonITCertification.com/join.

4. In the My Products tab, click the Activate New Product button.

5. Enter the access code printed on the insert card in the back of your book to

activate your product.

6. The product will now be listed in your My Products page. Click the Exams

button to launch the exam settings screen and start your exam.

Accessing the Pearson Test Prep Software Offline

If you wish to study offline, you can download and install the Windows version of the

Pearson Test Prep software. There is a download link for this software on the book’s

companion website, or you can just enter the following link in your browser:

http://www.pearsonitcertification.com/content/downloads/pcpt/engine.zip

To access the book’s companion website and the software, simply follow these steps:

1. Register your book by going to PearsonITCertification.com/register and entering

the ISBN 9781587147029.

2. Respond to the challenge questions.

3. Go to your account page and select the Registered Products tab.

4. Click the Access Bonus Content link under the product listing.

5. Click the Install Pearson Test Prep Desktop Version link under the Practice

Exams section of the page to download the software.

6. Once the software finishes downloading, unzip all the files on your computer.

7. Double-click the application file to start the installation, and follow the onscreen

instructions to complete the registration.

8. Once the installation is complete, launch the application and select the Activate

Exam button on the My Products tab.

9. Click the Activate a Product button in the Activate Product Wizard.

10. Enter the unique access code found on the card in the sleeve in the back of your

book and click the Activate button.

11. Click Next and then the Finish button to download the exam data to your

application.

12. You can now start using the practice exams by selecting the product and clicking

the Open Exam button to open the exam settings screen.

Note that the offline and online versions will sync together, so saved exams and grade

results recorded on one version will be available to you on the other as well.

Customizing Your Exams

Once you are in the exam settings screen, you can choose to take exams in one of three

modes:

Study mode

Practice Exam mode

Flash Card mode

Study mode allows you to fully customize your exams and review answers as you are

taking the exam. This is typically the mode you would use first to assess your

knowledge and identify information gaps. Practice Exam mode locks certain

customization options, as it is presenting a realistic exam experience. Use this mode

when you are preparing to test your exam readiness. Flash Card mode strips out the

answers and presents you with only the question stem. This mode is great for late-stage

preparation when you really want to challenge yourself to provide answers without the

benefit of seeing multiple-choice options. This mode will not provide the detailed score

reports that the other two modes will, so it should not be used if you are trying to

identify knowledge gaps.

In addition to these three modes, you will be able to select the source of your questions.

You can choose to take exams that cover all of the chapters or you can narrow your

selection to just a single chapter or the chapters that make up a specific part in the book.

All chapters are selected by default. If you want to narrow your focus to individual

chapters, simply deselect all the chapters and then select only those on which you wish to

focus in the Objectives area.

You can also select the exam banks on which to focus. Each exam bank comes complete

with a full exam of questions that cover topics in every chapter. The two exams printed

in the book are available to you as well as two additional exams of unique questions.

You can have the test engine serve up exams from all four banks or just from one

individual bank by selecting the desired banks in the exam bank area.

There are several other customizations you can make to your exam from the exam

settings screen, such as the time of the exam, the number of questions served up, whether

to randomize questions and answers, whether to show the number of correct answers for

multiple-answer questions, and whether to serve up only specific types of questions.

You can also create custom test banks by selecting only questions that you have marked

or questions on which you have added notes.

Updating Your Exams

If you are using the online version of the Pearson Test Prep software, you should always

have access to the latest version of the software as well as the exam data. If you are

using the Windows desktop version, every time you launch the software, it will check to

see if there are any updates to your exam data and automatically download any changes

that were made since the last time you used the software. This requires that you are

connected to the Internet at the time you launch the software.

Sometimes, due to many factors, the exam data may not fully download when you

activate your exam. If you find that figures or exhibits are missing, you may need to

manually update your exam.

To update a particular exam you have already activated and downloaded, simply select

the Tools tab and select the Update Products button. Again, this is only an issue with

the desktop Windows application.

If you wish to check for updates to the Pearson Test Prep software, Windows desktop

version, simply select the Tools tab and select the Update Application button. This will

ensure you are running the latest version of the software engine.

Part I: Network Concepts

Chapter 1. Fundamentals of Networking Protocols and Networking Devices

This chapter covers the following topics:

Introduction to TCP/IP and OSI models

Wired LAN and Ethernet

Frame switching

Hub, switch, and router

Wireless LAN and technologies

Wireless LAN controller and access point

IPv4 and IPv6 addressing

IP routing

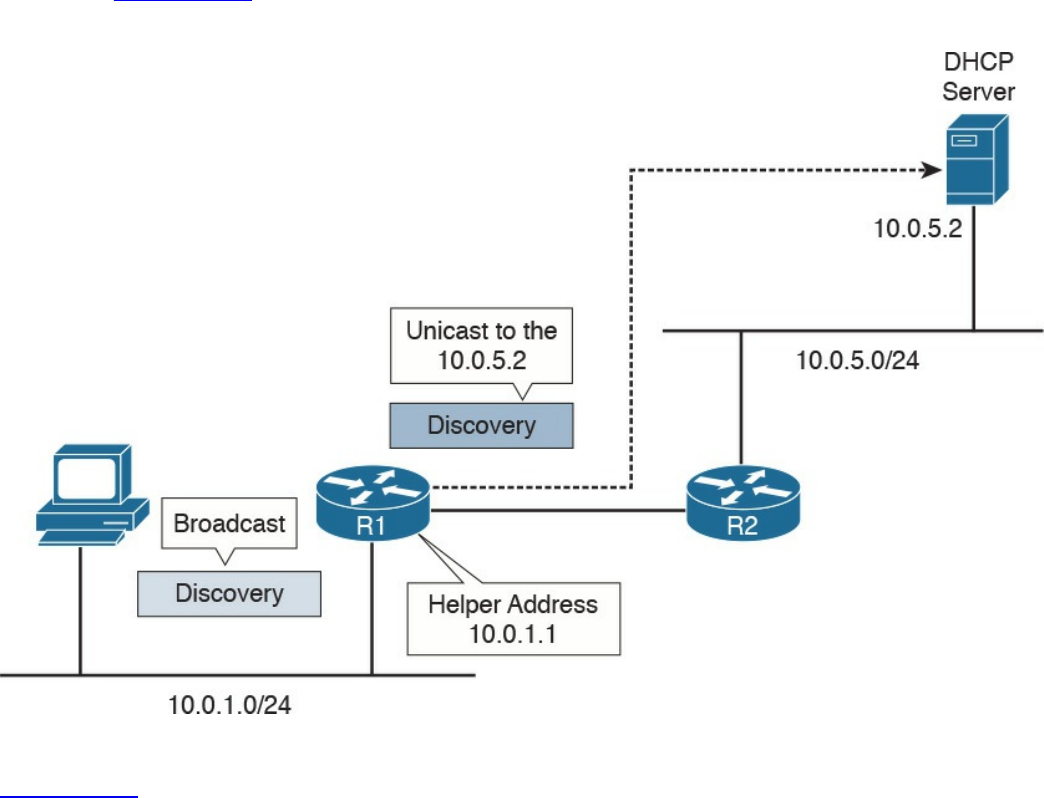

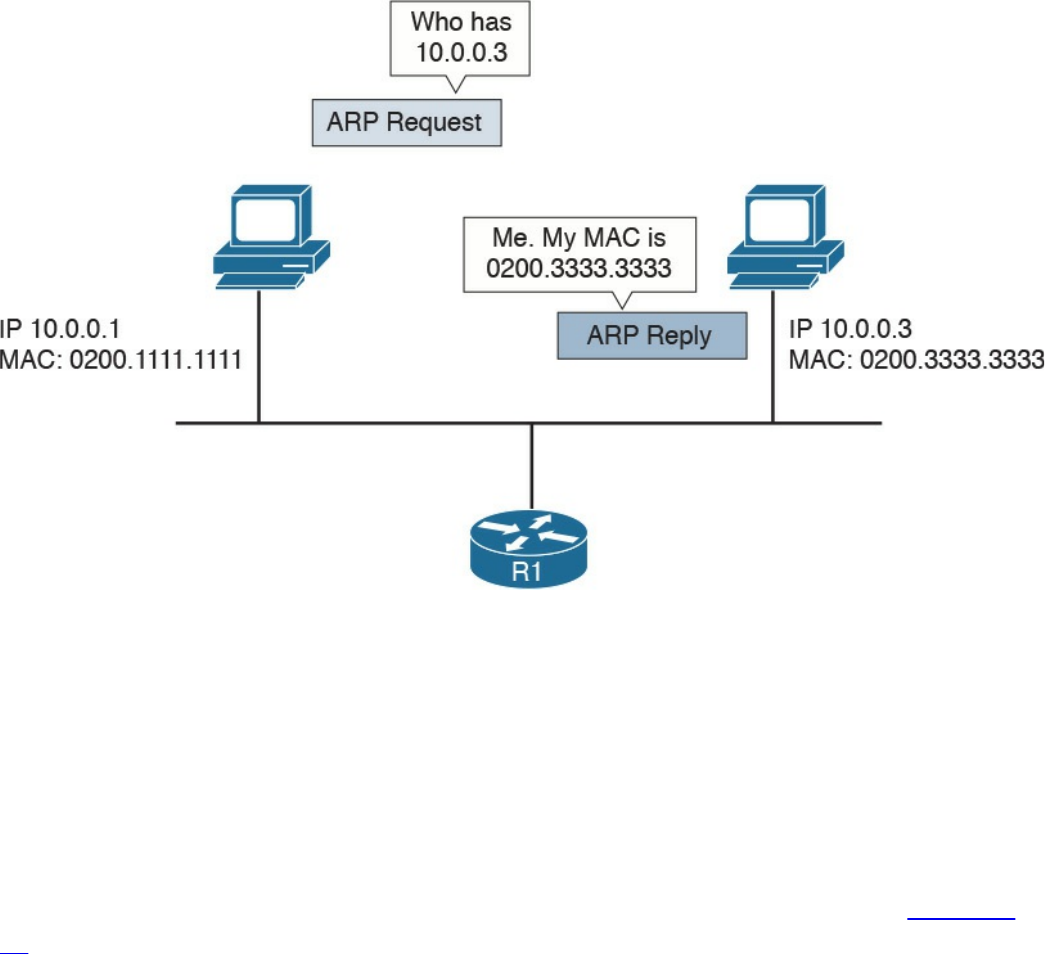

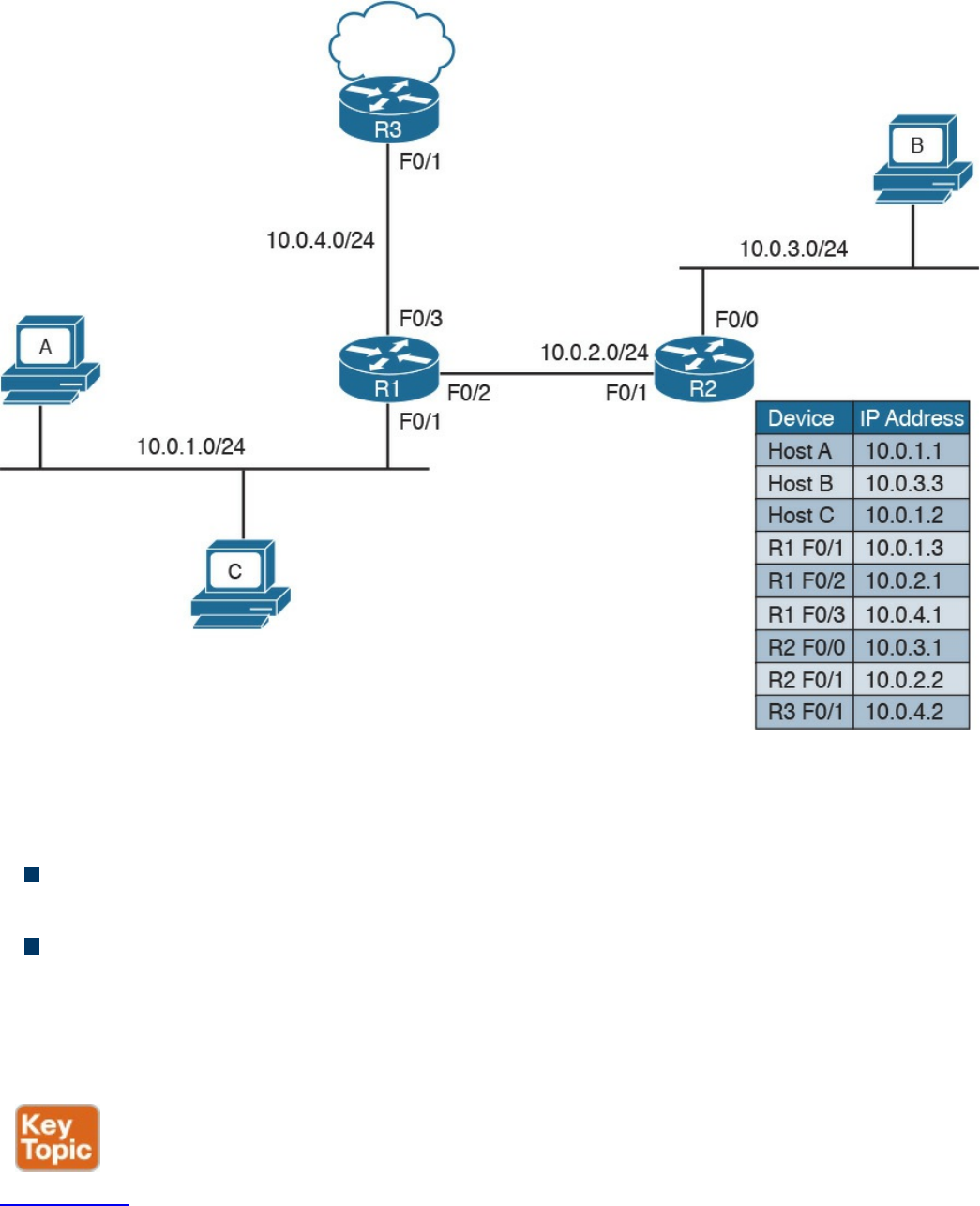

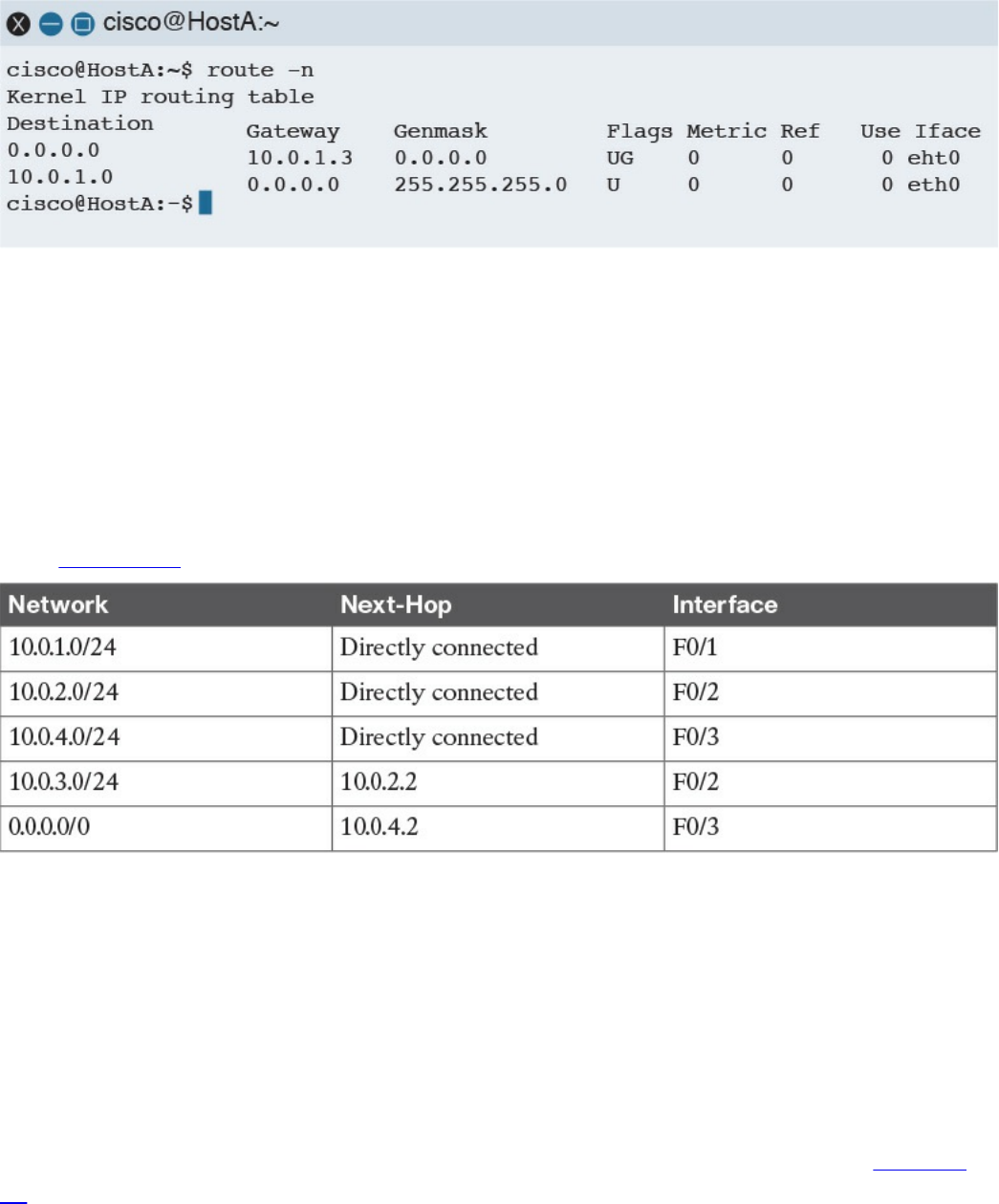

ARP, DHCP, ICMP, and DNS

Transport layer protocols

Welcome to the first chapter of the CCNA Cyber Ops SECFND #210-250 Official Cert

Guide. In this chapter, we go through the fundamentals of networking protocols and

explore how devices such as switches and routers work to allow two hosts to

communicate with each other, even if they are separated by many miles.

If you are already familiar with these topics—for example, if you already have a CCNA

Routing and Switching certification—this chapter will serve as a refresher on protocols

and device operations. If, on the other hand, you are approaching these topics for the

first time, you’ll learn about the fundamental protocols and devices at the base of

Internet communication and how they work.

This chapter begins with an introduction to the TCP/IP and OSI models and then

explores link layer technologies and protocols—specifically the Ethernet and Wireless

LAN technologies. We then discuss how the Internet Protocol (IP) works and how a

router uses IP to move packets from one site to another. Finally, we look into the two

most used transport layer protocols: Transmission Control Protocol (TCP) and User

Datagram Protocol (UDP).

“Do I Know This Already?” Quiz

The “Do I Know This Already?” quiz helps you identify your strengths and deficiencies

in this chapter’s topics. The 13-question quiz, derived from the major sections in the

“Foundation Topics” portion of the chapter, helps you determine how to spend your

limited study time. You can find the answers in Appendix A Answers to the “Do I Know

This Already?” Quizzes and Q&A Questions.

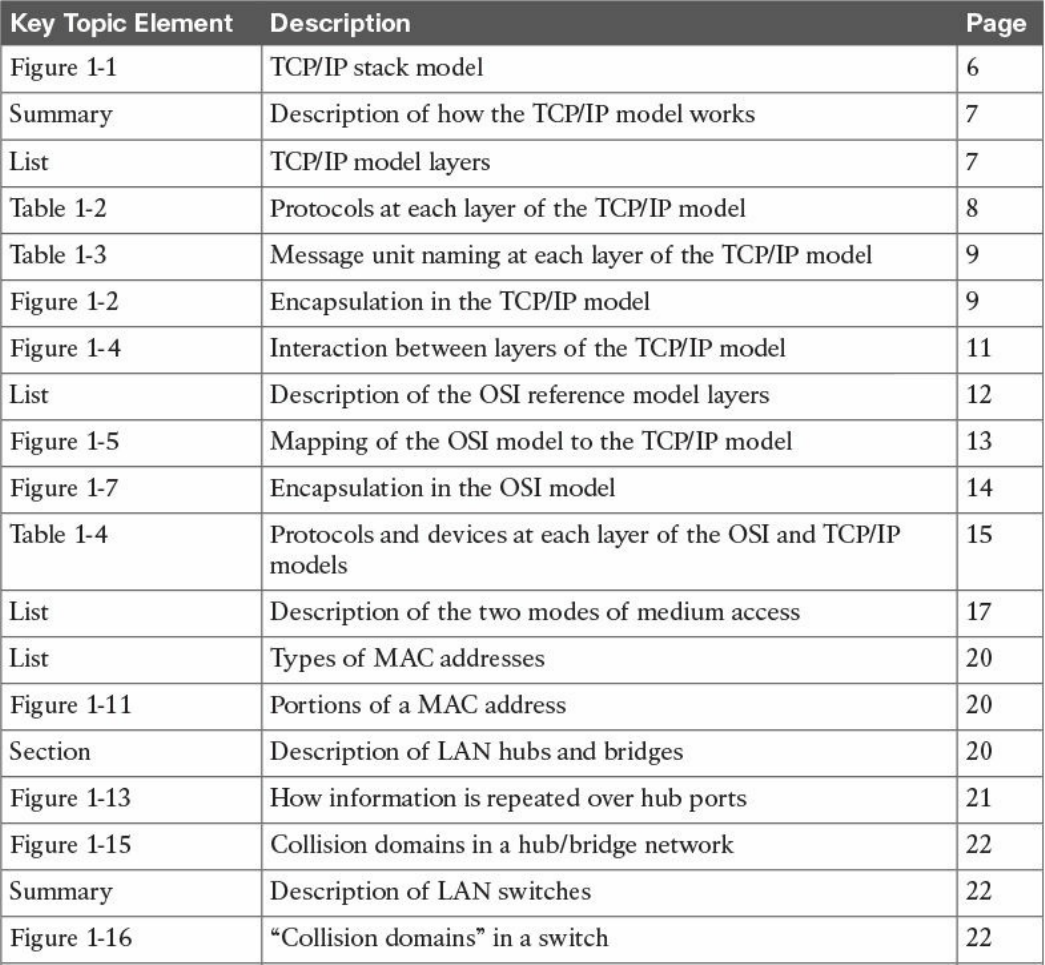

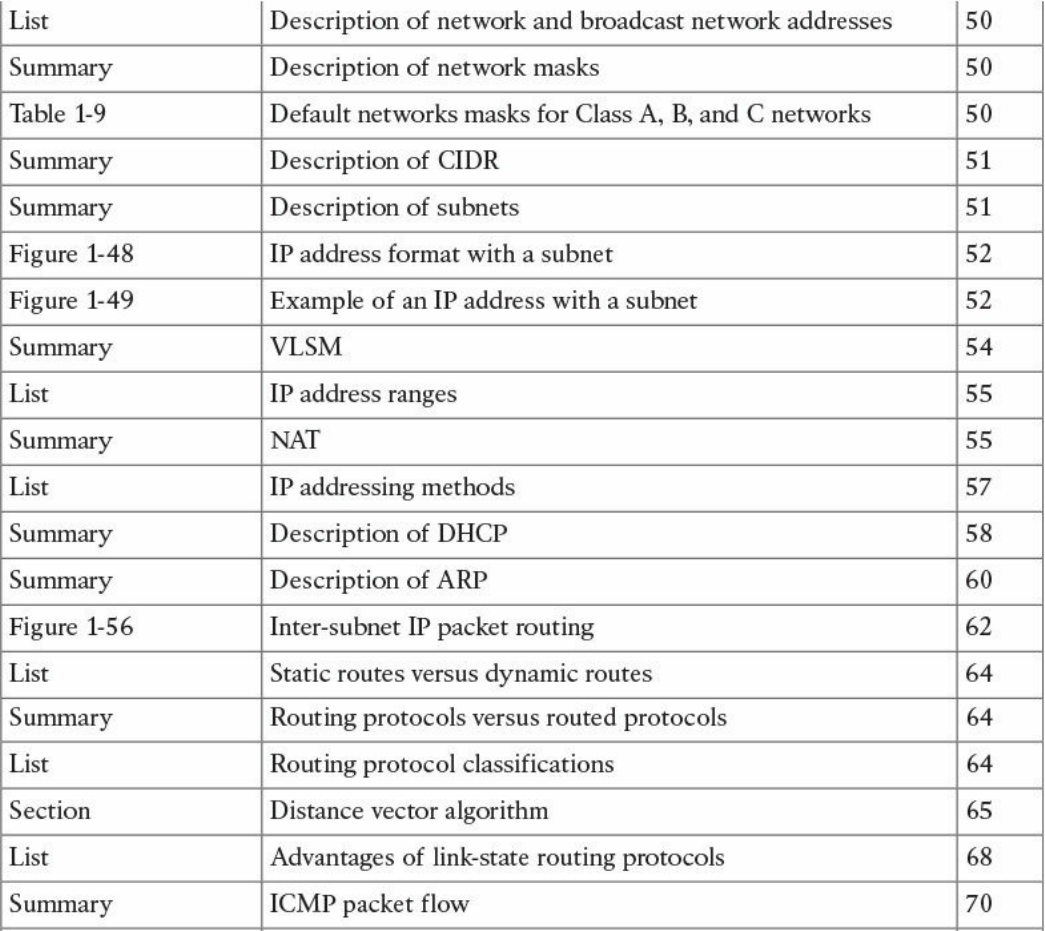

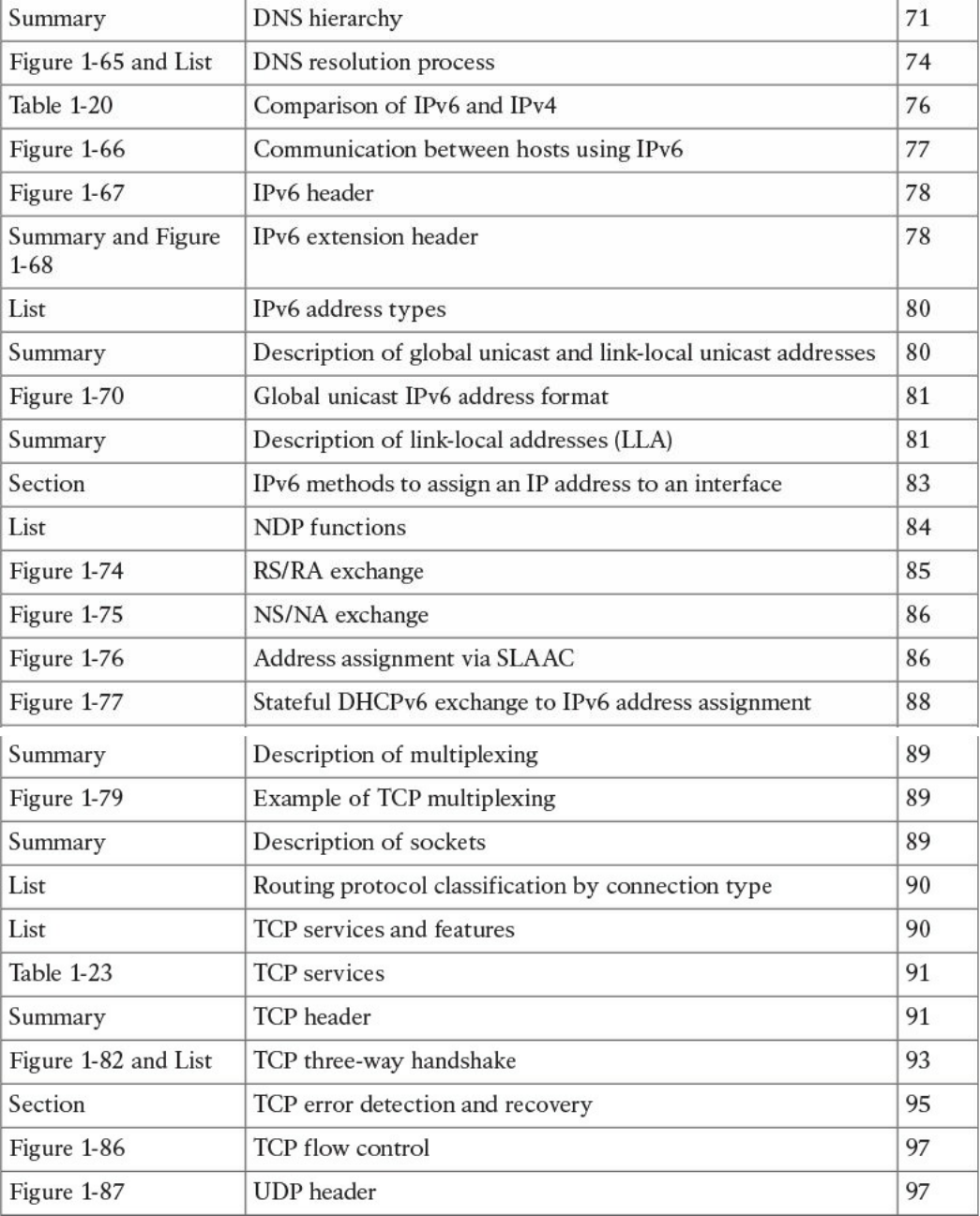

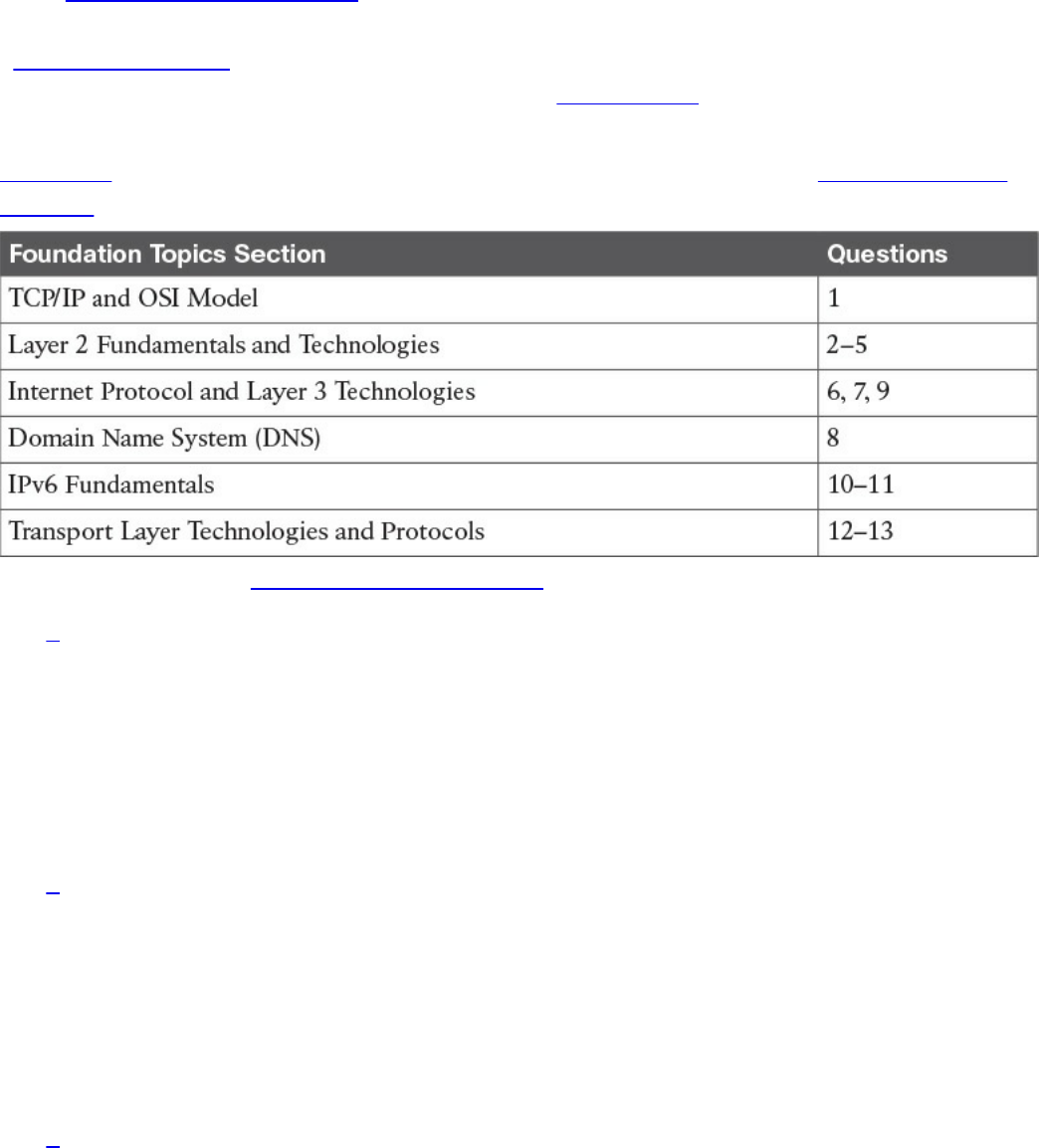

Table 1-1 outlines the major topics discussed in this chapter and the “Do I Know This

Already?” quiz questions that correspond to those topics.

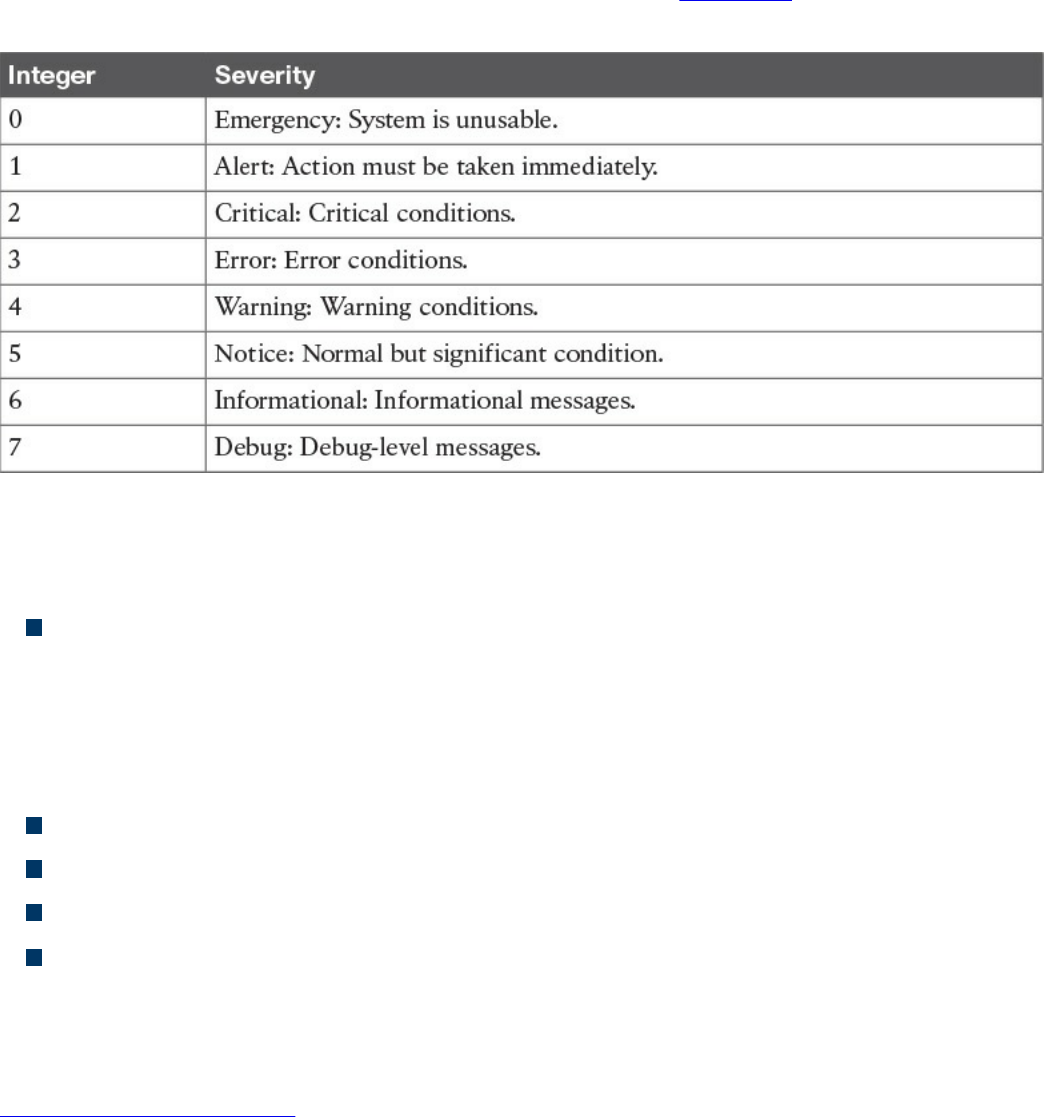

Table 1-1 “Do I Know This Already?” Section-to-Question Mapping

1. Which layer of the TCP/IP model is concerned with end-to-end communication

and offers multiplexing service?

a. Transport

b. Internet

c. Link layer

d. Application

2. Which statement is true concerning a link working in Ethernet half-duplex mode?

a. A collision cannot happen.

b. When a collision happens, the two stations immediately retransmit.

c. When a collision happens, the two stations wait for a random time before

retransmitting.

d. To avoid a collision, stations wait a random time before transmitting.

3. What is the main characteristic of a hub?

a. It regenerates the signal and retransmits on all ports.

b. It uses a MAC address table to switch frames.

c. When a packet arrives, the hub looks up the routing table before forwarding

the packet.

d. It supports full-duplex mode of transmission.

4. Where is the information about ports and device Layer 2 addresses kept in a

switch?

a. MAC address table

b. Routing table

c. L2 address table

d. Port table

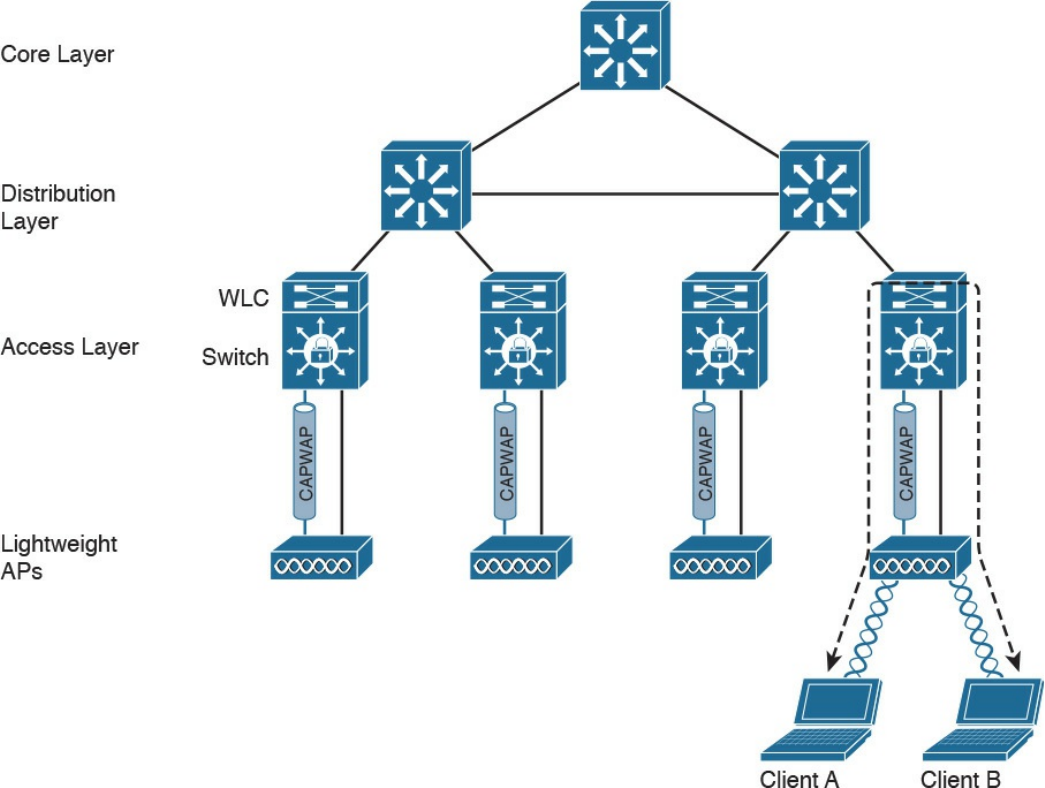

5. Which of the following features are implemented by a wireless LAN controller?

(Select all that apply.)

a. Wireless station authentication

b. Quality of Service

c. Channel encryption

d. Transmission and reception of frames

6. Which IP header field is used to recognize fragments from the same packet?

a. Identification

b. Fragment Offset

c. Flags

d. Destination Address

7. Which protocol is used to request a host MAC address given a known IP

address?

a. ARP

b. DHCP

c. ARPv6

d. DNS

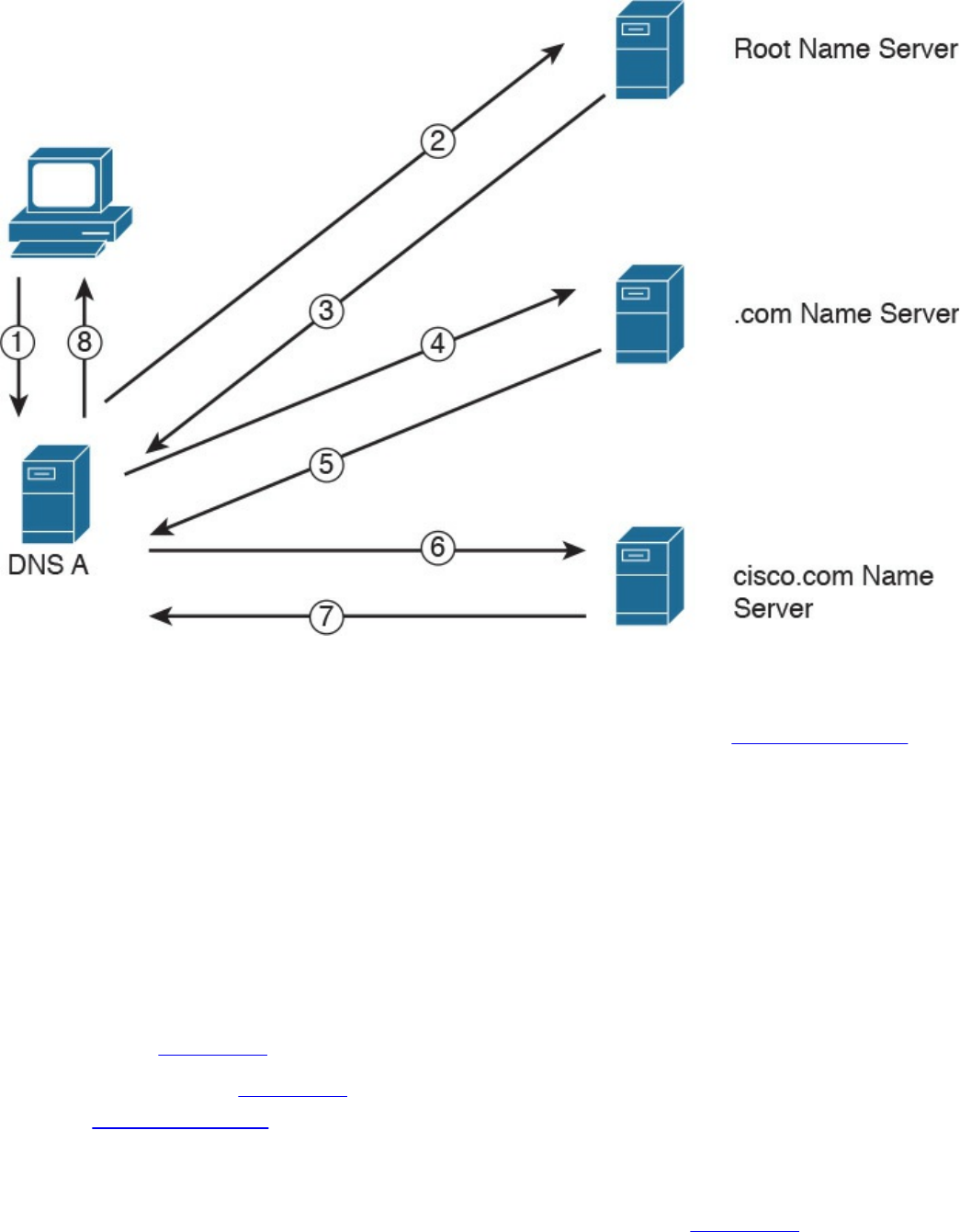

8. Which type of query is sent from a DNS resolver to a DNS server?

a. Recursive

b. Iterative

c. Simple

d. Type Q query

9. How many host IPv4 addresses are possible in a /25 network?

a. 126

b. 128

c. 254

d. 192

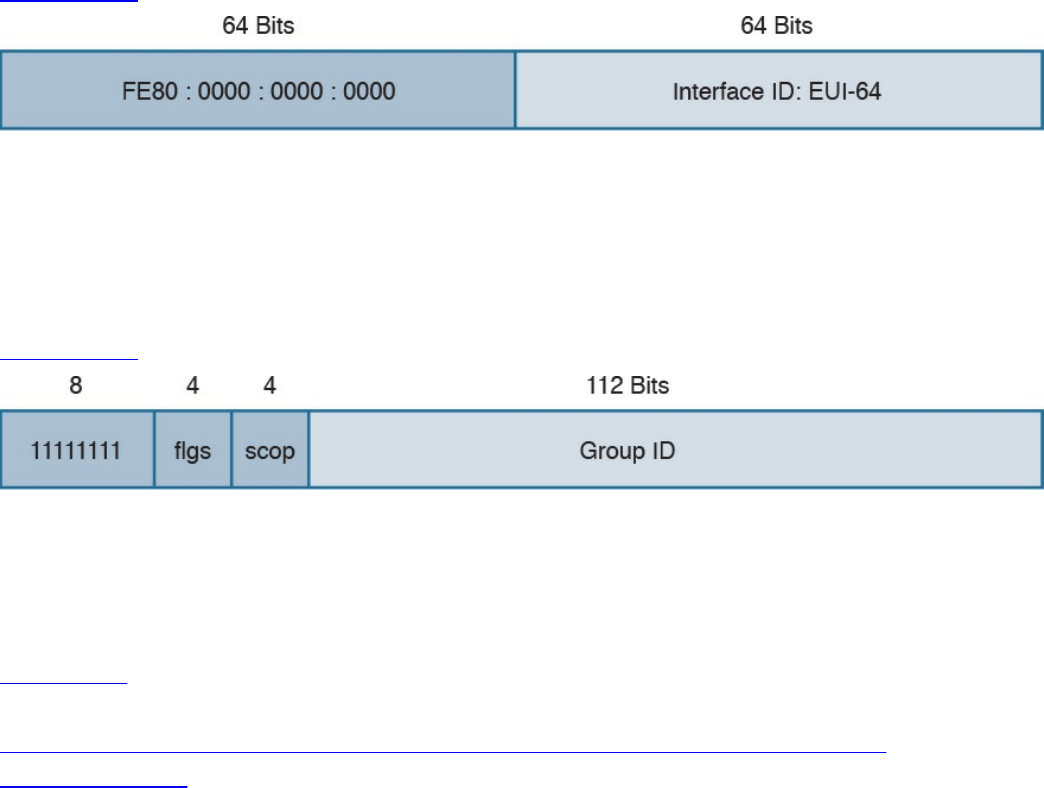

10. How many bits can be used for host IPv6 address assignment in the 2345::/64

network?

a. 48

b. 64

c. 16

d. 2^64

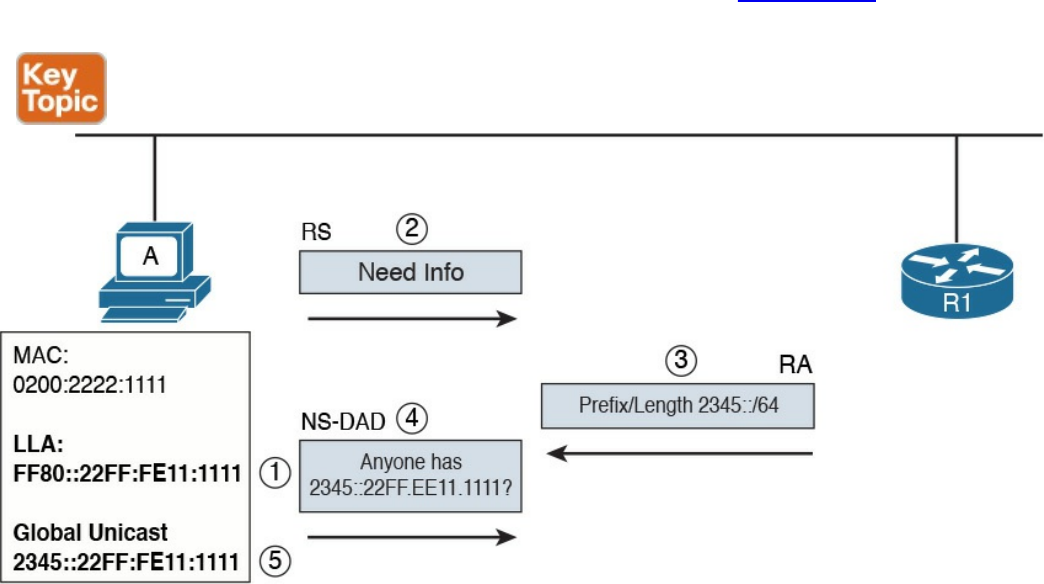

11. What is SLAAC used for?

a. To provide an IPv6 address to a client

b. To route IPv6 packets

c. To assign a DNS server

d. To provide a MAC address given an IP address

12. Which one of these protocols requires a connection to be established before

transmitting data?

a. TCP

b. UDP

c. IP

d. OSPF

13. What is the TCP window field used for?

a. Error detection

b. Flow control

c. Fragmentation

d. Multiplexing

Foundation Topics

TCP/IP and OSI Model

Two main models are currently used to explain the operation of an IP-based network.

These are the TCP/IP model and the Open Systems Interconnection (OSI) model. This

section provides an overview of these two models.

TCP/IP Model

The TCP/IP model is the foundation for most of the modern communication networks.

Every day, each of us uses some application based on the TCP/IP model to

communicate. Think, for example, about a task we consider simple: browsing a web

page. That simple action would not be possible without the TCP/IP model.

The TCP/IP model’s name includes the two main protocols we will discuss in the

course of this chapter: Transmission Control Protocol (TCP) and Internet Protocol (IP).

However, the model goes beyond these two protocols and defines a layered approach

that can map nearly any protocol used in today’s communication.

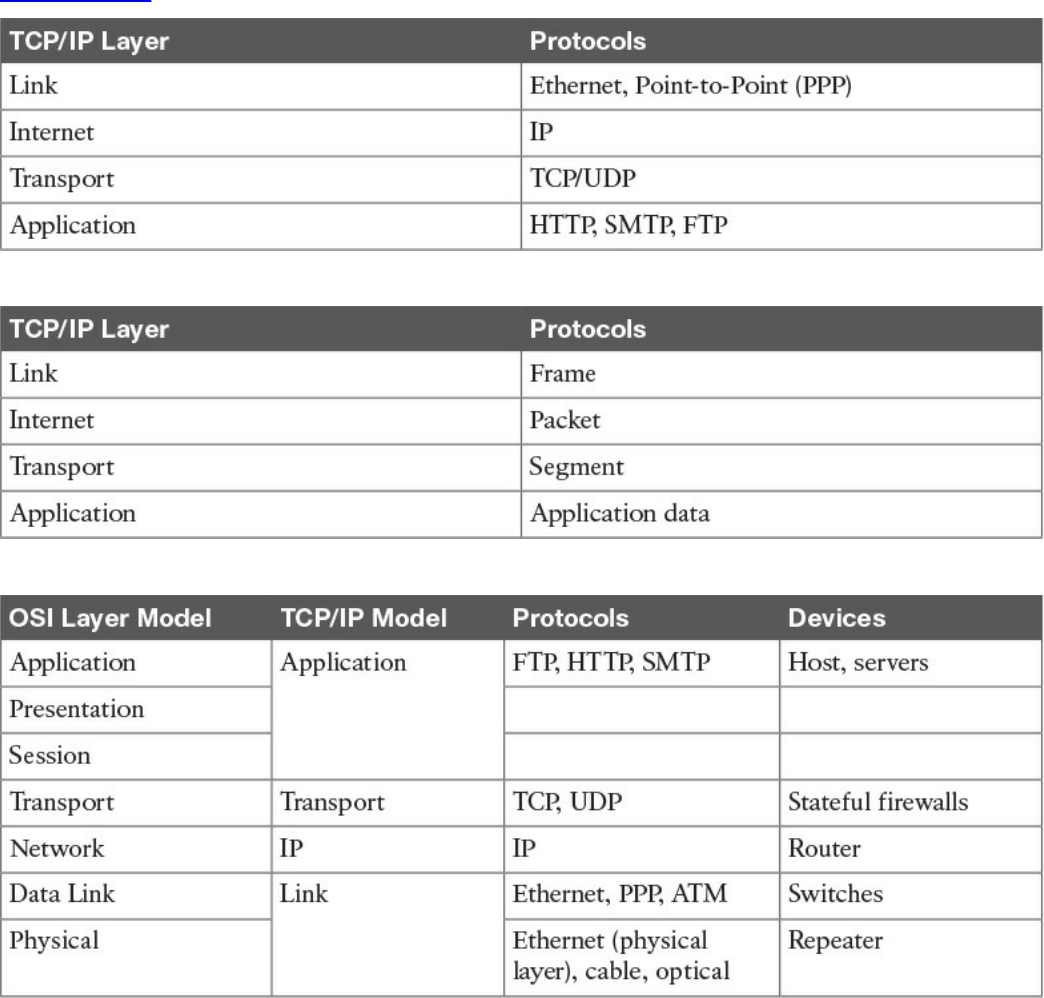

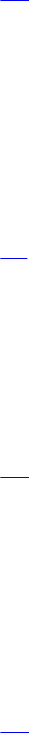

In its original definition, the TCP/IP model included four layers, where each of the

layers would provide transmission and other services for the level above it. These are

the link layer, internet layer, transport layer, and application layer.

In its most modern definition, the link layer is split into two additional layers to clearly

demark the physical and data link type of services and protocols included in this layer.

Internet layer is also sometimes called the networking layer, which is based on another

very known model, the OSI model, which is described in the next section. Figure 1-1

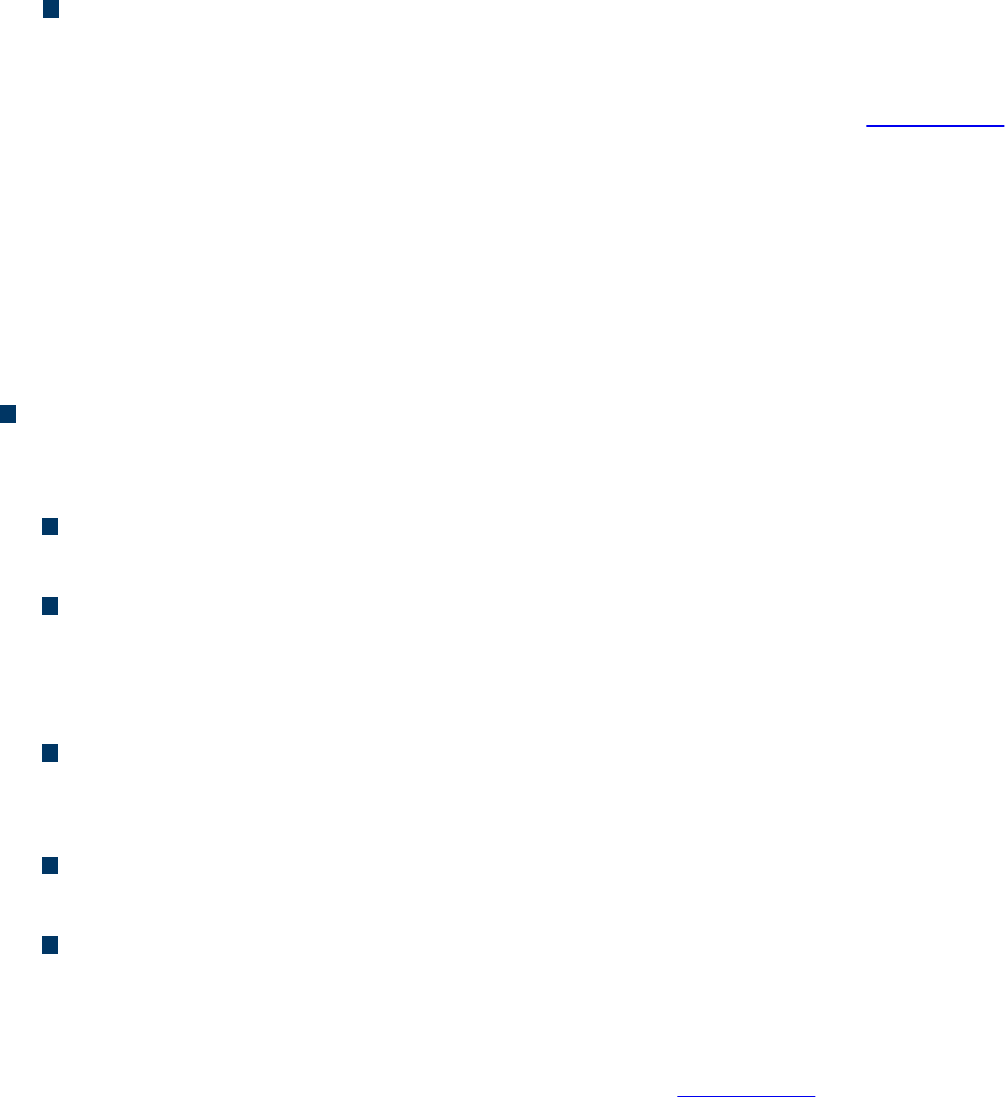

shows the TCP/IP stack model.

Figure 1-1 TCP/IP Stack Model

The TCP/IP model works on two main concepts that define how the layers interact:

On the same host, each layer works by providing services for the layer above it on

the TCP/IP stack.

On different hosts, communication between peer layers is established by using the

same protocol at that layer.

For example, on your personal computer, the TCP/IP stack is implemented to allow

networking communication. The link layer provides services for the IP layer (for

example, encapsulation of an IP packet in an Ethernet frame). The IP layer provides

services to the transport layer (for example, IP routing and IP addressing), and so on.

These are all examples of services provided to the layer above it within the host.

Now imagine that your personal computer wants to connect to a web server (for

example, to browse a web page). The web server will also implement the TCP/IP stack.

In this case, the IP layer of your personal computer and the IP layer of the web server

will use a common protocol, IP, for the communication. The same thing will happen

with the transport protocol, where the two devices will use TCP, and so on. These are

examples of the same layer protocol used on different hosts to communicate.

Later in this chapter, the “Networking Communication with the TCP/IP Model” section

provides more detail about how the communication works between two hosts and how

the TCP/IP stack is used on the same host.

The list that follows analyzes each layer in a bit more detail:

Link layer: The link layer provides physical transmission support and includes the

protocols used to transmit information over a link between two devices. In simple

terms, the link layer includes the hardware and protocol necessary to send

information between two hosts that are connected by a physical link (for example, a

cable) or over the air (for example, via radio waves). It also includes the notion of

and mechanisms for information being replicated and retransmitted over several

ports or links by dedicated devices such as switches and bridges.

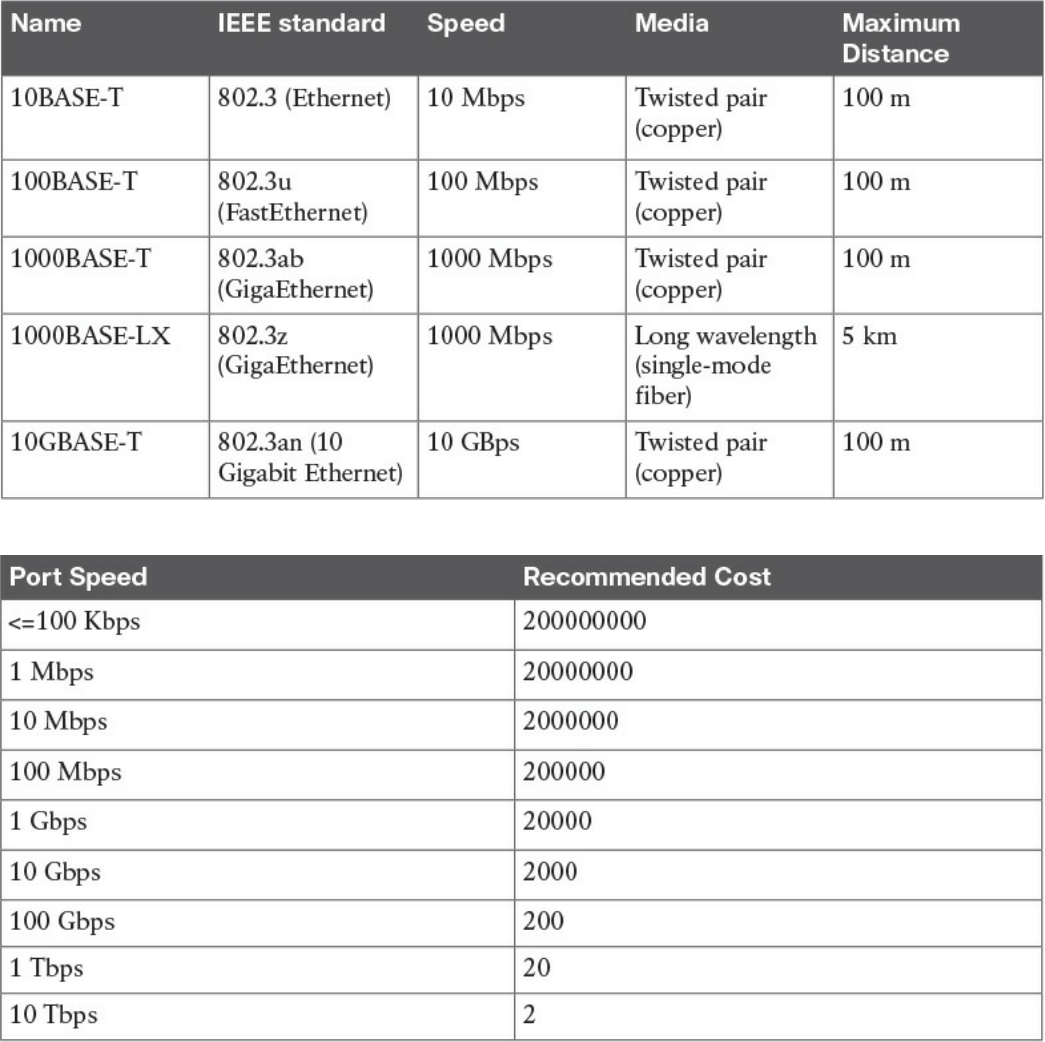

Because different physical means are used to transmit information, there are several

protocols that work at the link layer. One of the most popular is the Ethernet

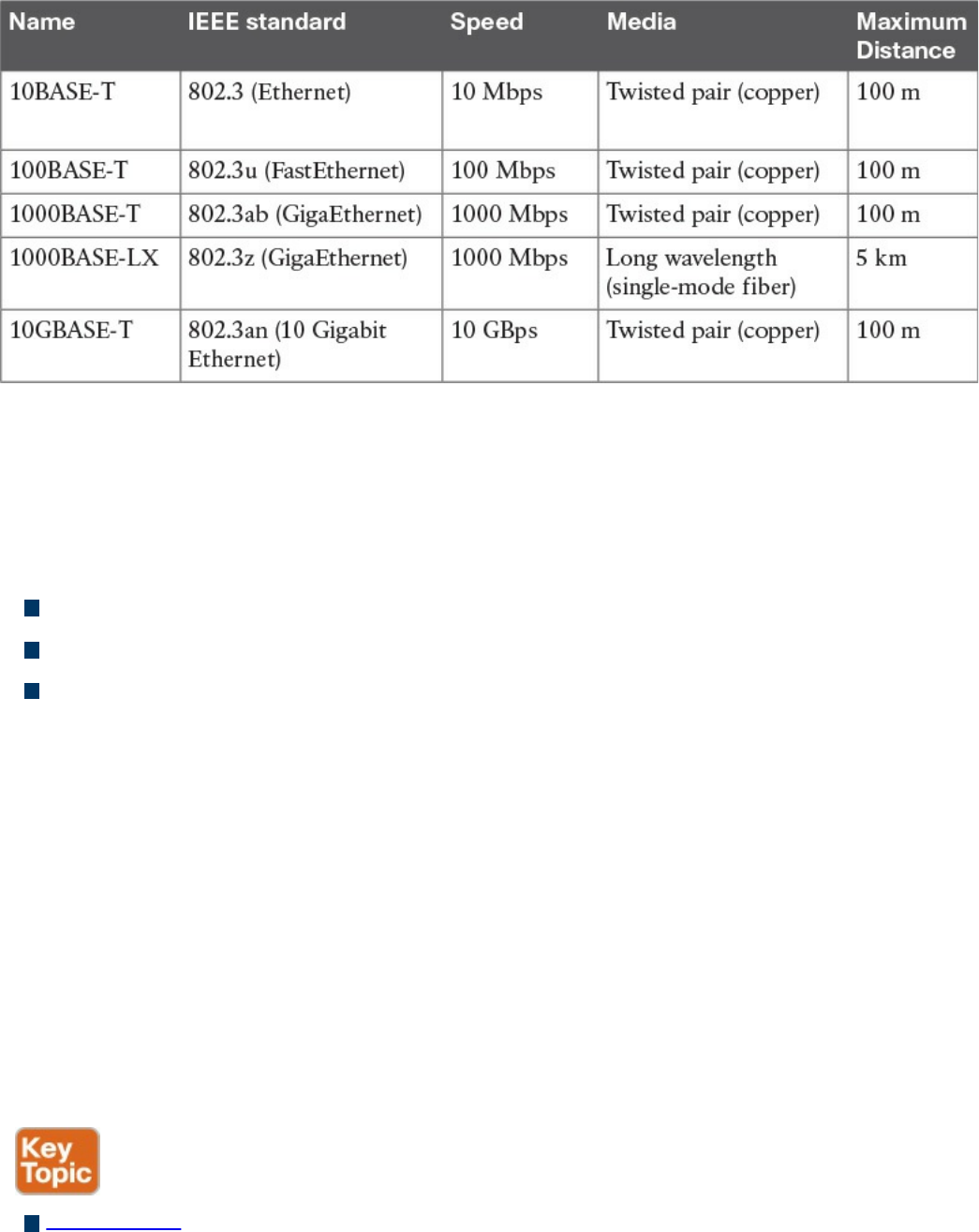

protocol. As mentioned earlier, nowadays the link layer is usually split further in

the physical layer, which is concerned about physical bit transmission, and the data

link layer, which provides encapsulation and addressing facilities as well as

abstraction for the upper layers.

At link layer, the message unit is called a frame.

Internet layer: Of course, not all devices can be directly connected to each other,

so there is a need to transmit the information across multiple devices. The Internet

layer provides networking services and includes protocols that allow for the

transmission of information through multiple hops. To do that, each host is identified

by an Internet Protocol (IP) address, or a different address if another Internet

Protocol type is used. Each intermediate device between two hosts, called a networking

node, knows how to reach the destination IP address and transmits the information

to the best next node toward the destination. The nodes are said to perform the

routing of the information, and the mechanism each node, also called a router, uses to

determine the best next node toward the destination is called a routing protocol.

At the Internet layer, the message unit is called a packet.

Transport layer: When transmitting information, the sending host knows when the

information is sent, but has no way to know whether it actually made it to the

destination. The transport layer provides services to successfully transfer

information between two end points. It abstracts the lower-level layer and is

concerned about the end-to-end process. For example, it is used to detect whether

any part of the information went missing. It also provides information about which

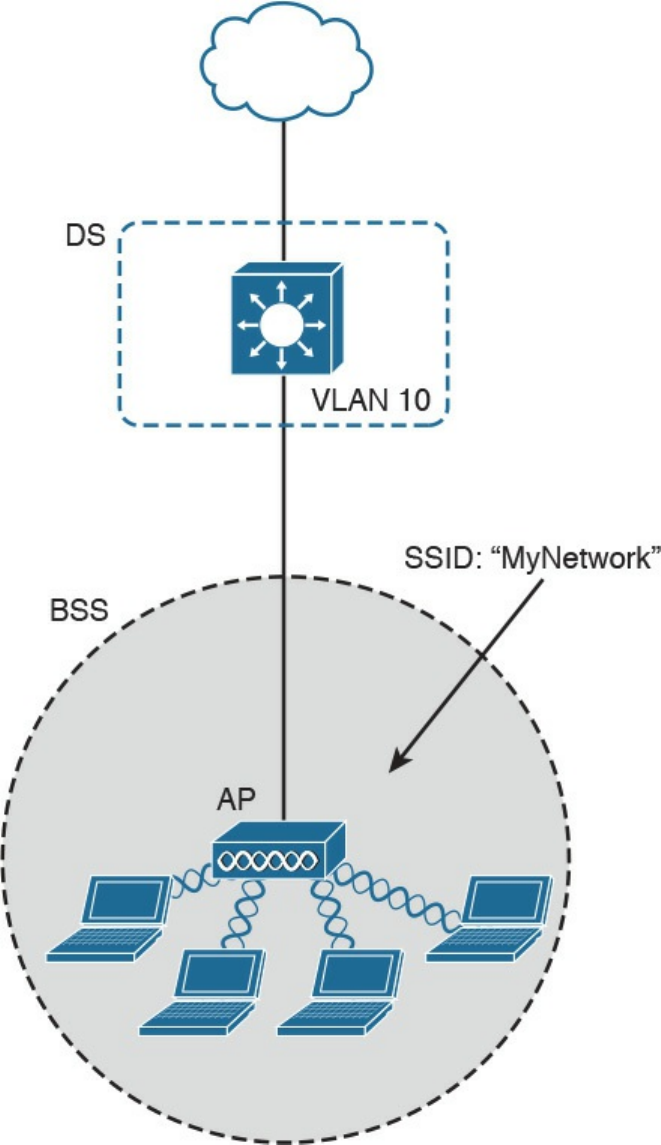

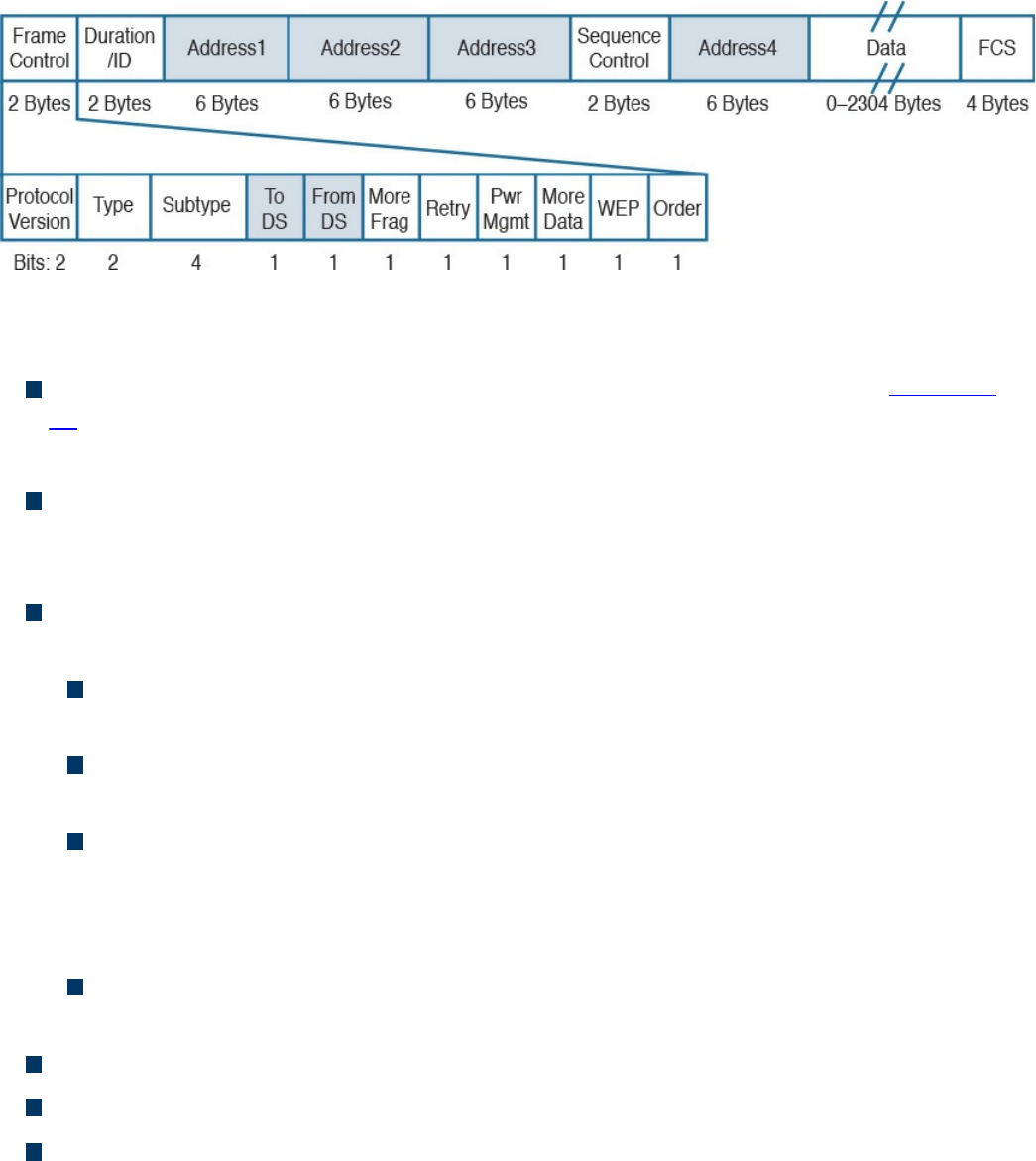

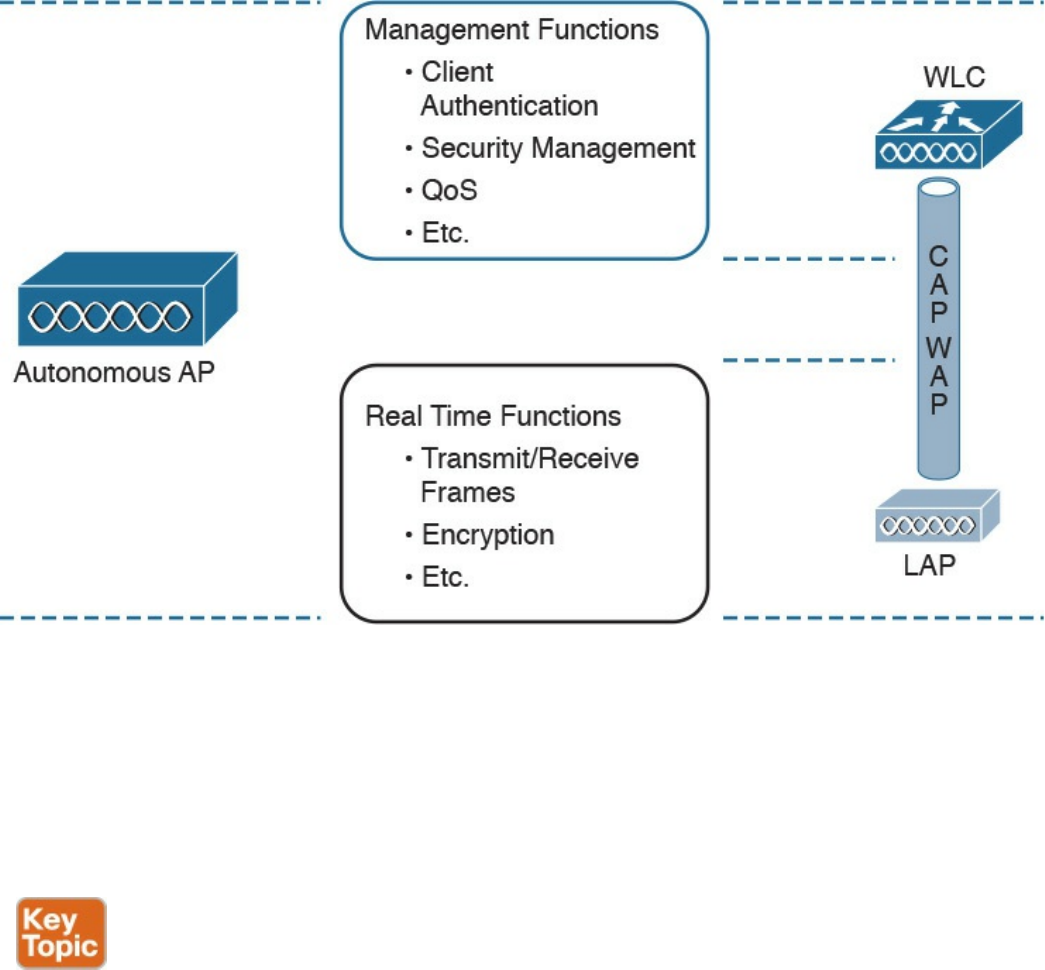

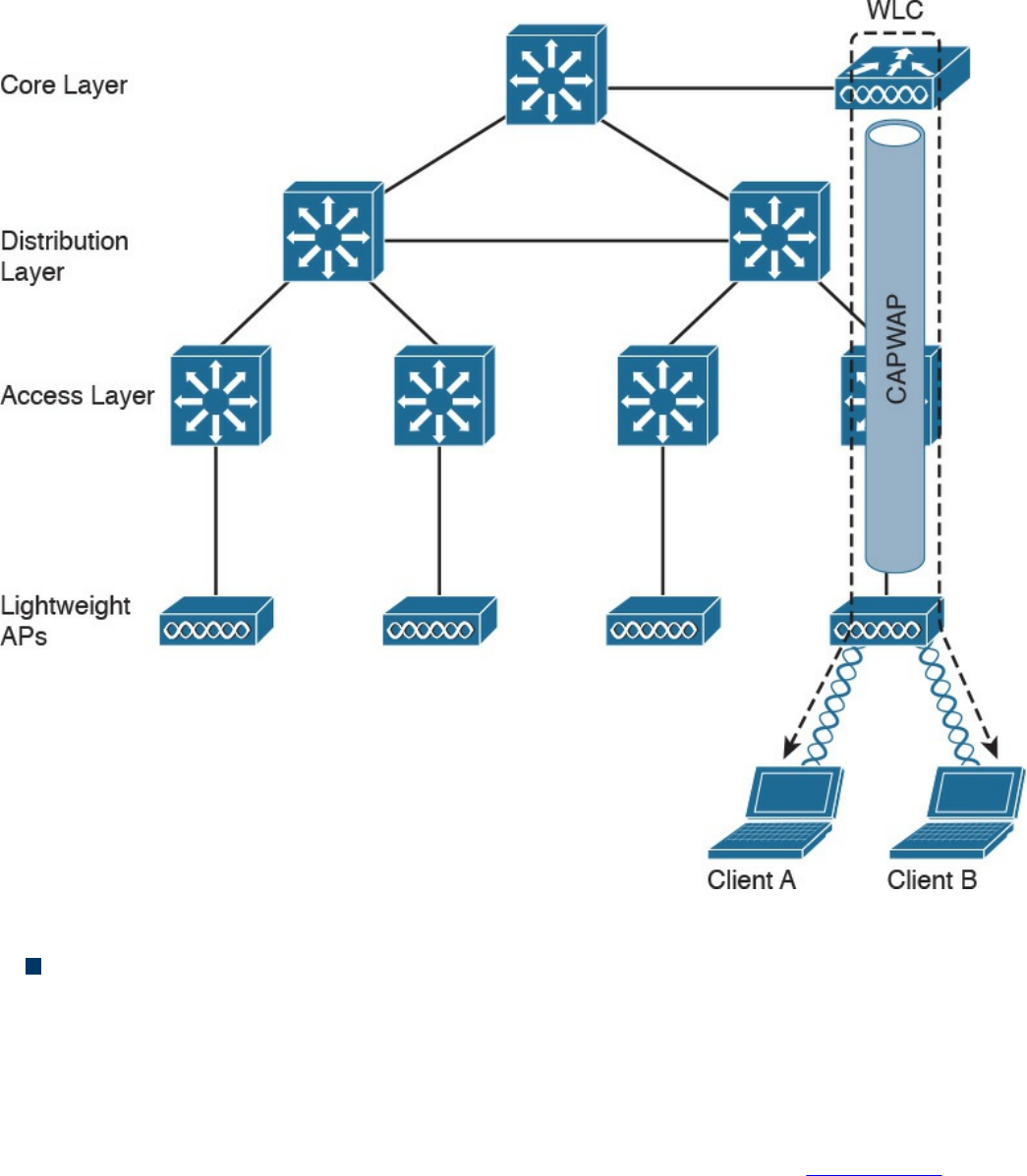

www.hellodigi.ir

type of information is being transmitted. For example, a host may want to request a

web page and also start an FTP transaction. How do we distinguish between these

two actions? The transport layer helps to separate the two requests by using the

concept of a transport layer port. Each service is enabled on a different transport

layer port—for example, port 80 for a web request or port 21 for an FTP

transaction. So when the destination host receives a request on port 80, it knows

that this needs to be passed to the application layer handling web requests. This

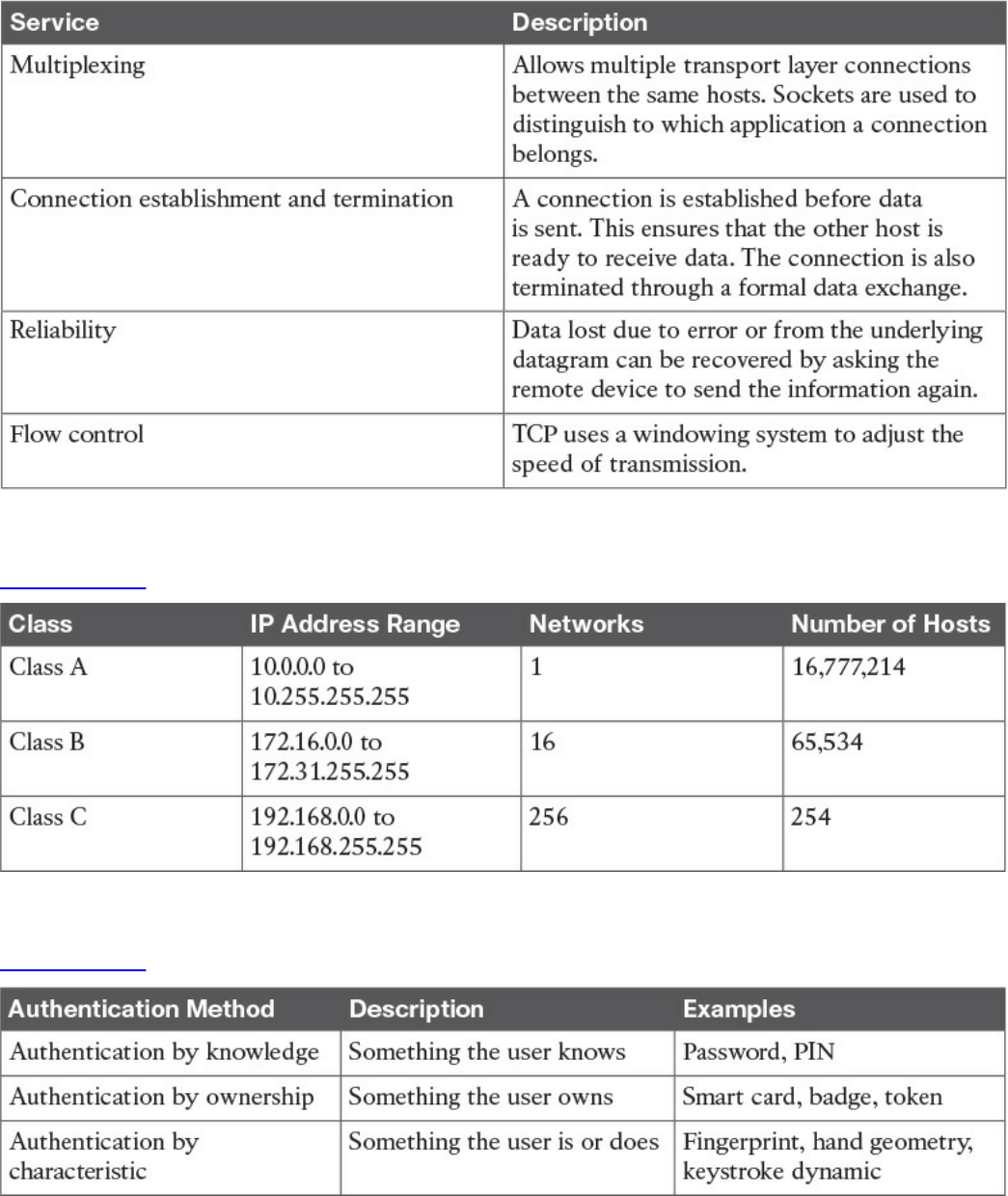

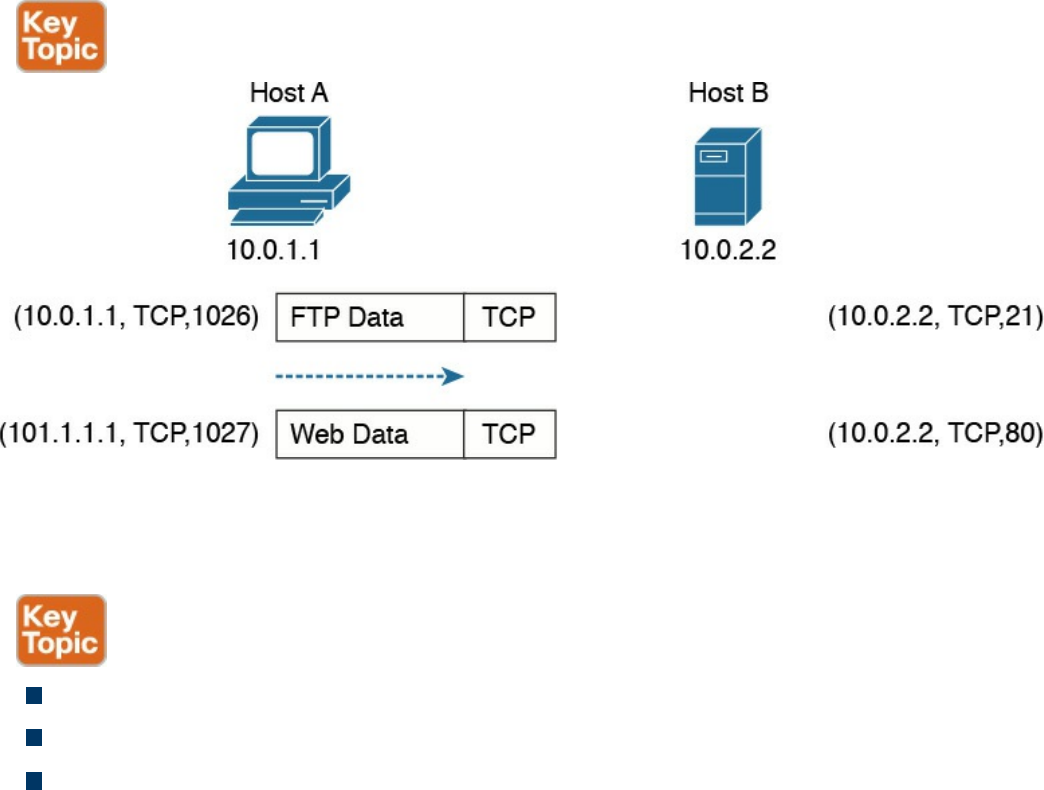

type of service provided by the transport layer is called multiplexing.

At this layer, the message unit is called a segment.

Application layer: The application layer is the top layer and is the one most

familiar to end users. For example, at the application layer, a user may use the

email client to send an email message or use a web browser to browse a website.

Both of these actions map to a specific application, which uses a protocol to fulfill

the service.

In this example, the Simple Message Transfer Protocol (SMTP) is used to handle

the email transfer, whereas the Hypertext Transfer Protocol (HTTP) is used to

request a web page within a browser. At this level, the protocols are not concerned

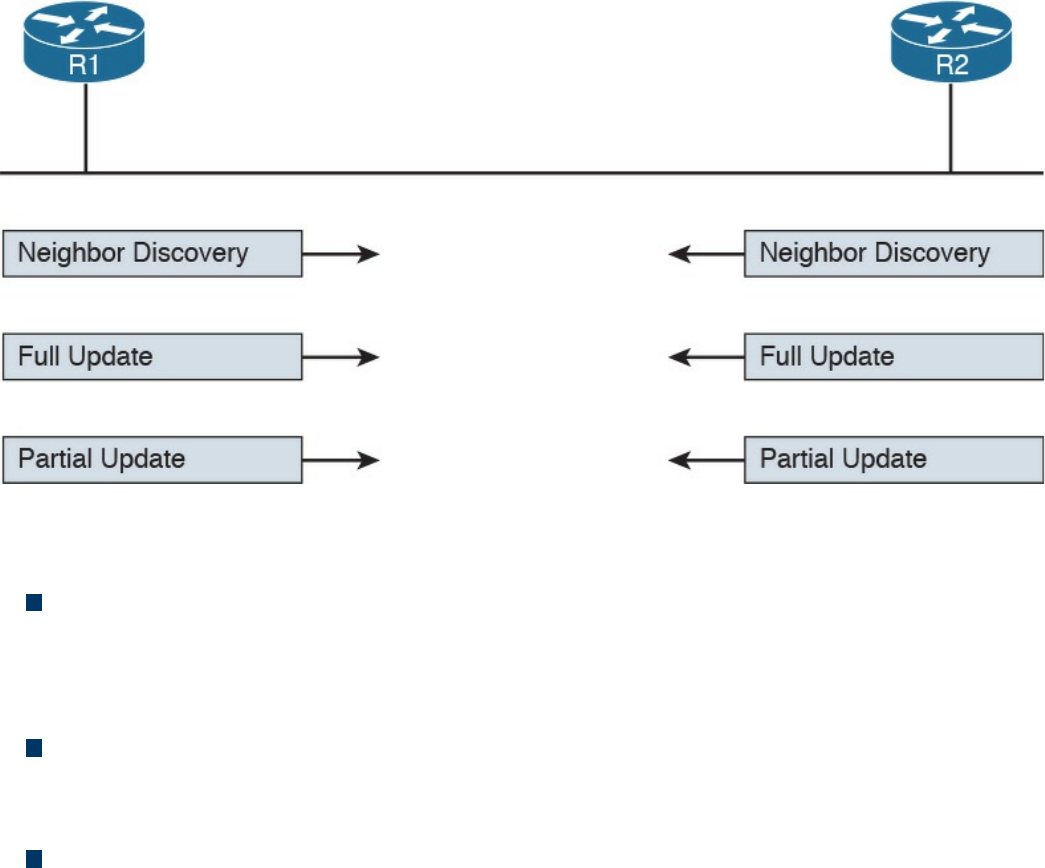

with how the information will reach the destination, but only work on defining the

content of the information being transmitted.
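
The port-based multiplexing described for the transport layer can be sketched in a few lines of Python. This is a toy model, not how an operating system implements it: the Segment type, the handler functions, and the LISTENERS table are hypothetical names introduced only for illustration. The port numbers 80 and 21 come from the text.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    """Hypothetical, simplified transport-layer message unit."""
    dst_port: int
    payload: bytes


def handle_web(payload: bytes) -> str:
    # Stand-in for the application that serves web requests.
    return "web application got " + payload.decode()


def handle_ftp(payload: bytes) -> str:
    # Stand-in for the application that serves FTP transactions.
    return "FTP application got " + payload.decode()


# Port-to-application table: port 80 for web requests, port 21 for FTP.
LISTENERS = {80: handle_web, 21: handle_ftp}


def deliver(segment: Segment) -> str:
    """Pass the payload to the application registered on the destination port."""
    handler = LISTENERS.get(segment.dst_port)
    if handler is None:
        return f"no application listening on port {segment.dst_port}"
    return handler(segment.payload)
```

Calling deliver(Segment(80, b"GET /index.html")) hands the payload to the web handler, while a segment addressed to port 21 reaches the FTP handler. The destination host needs nothing more than the port number to tell the two requests apart.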

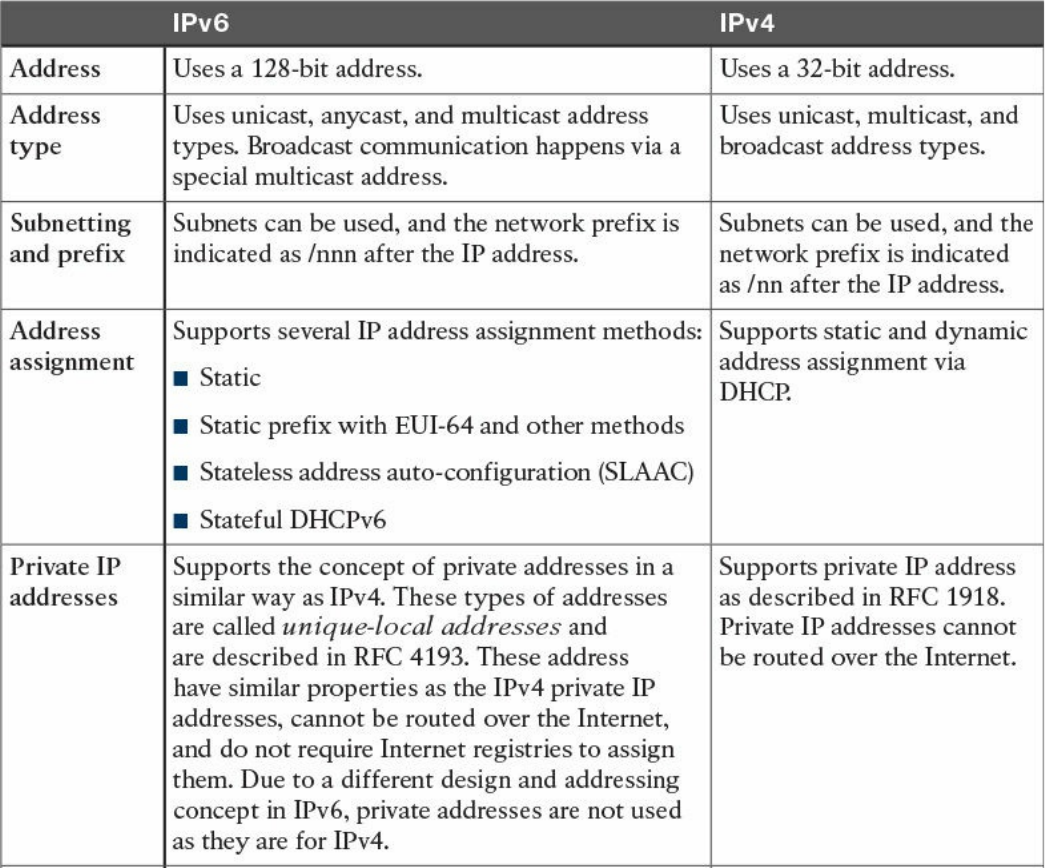

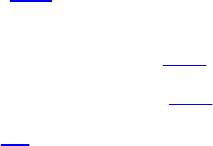

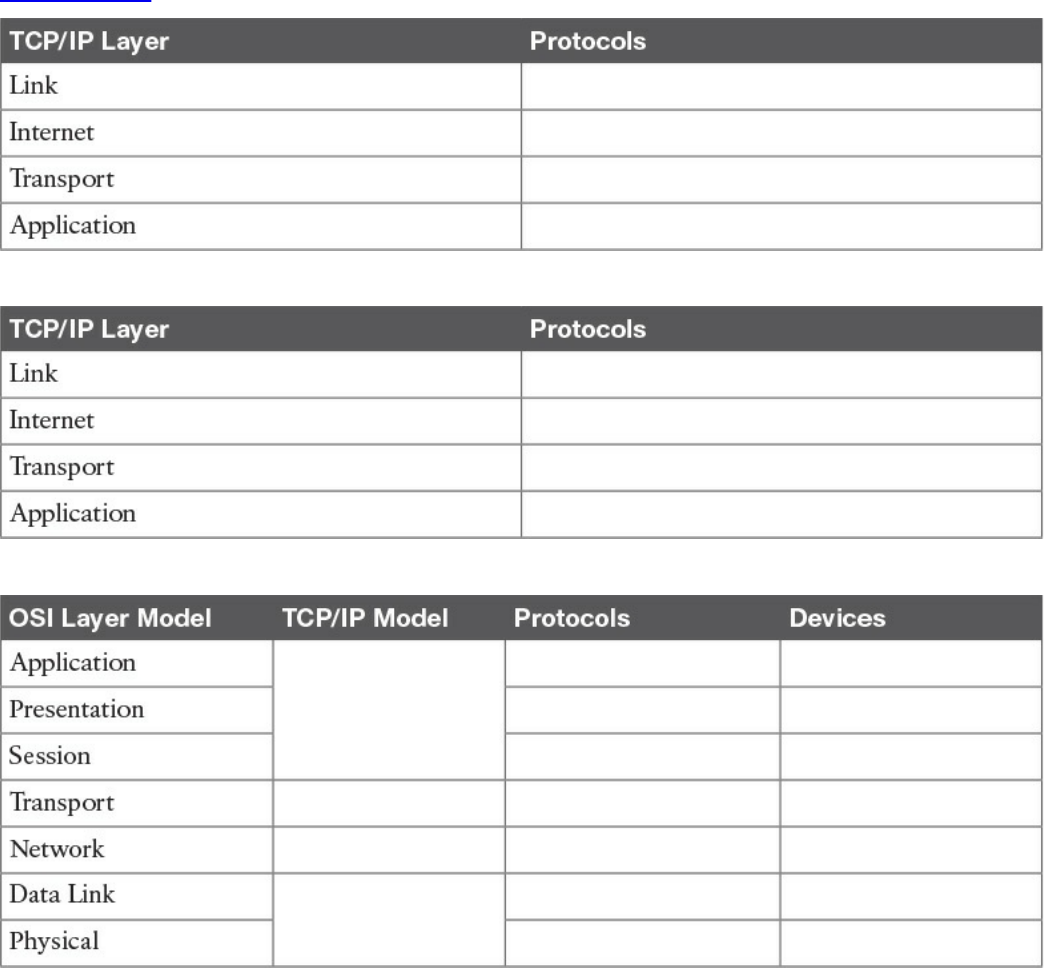

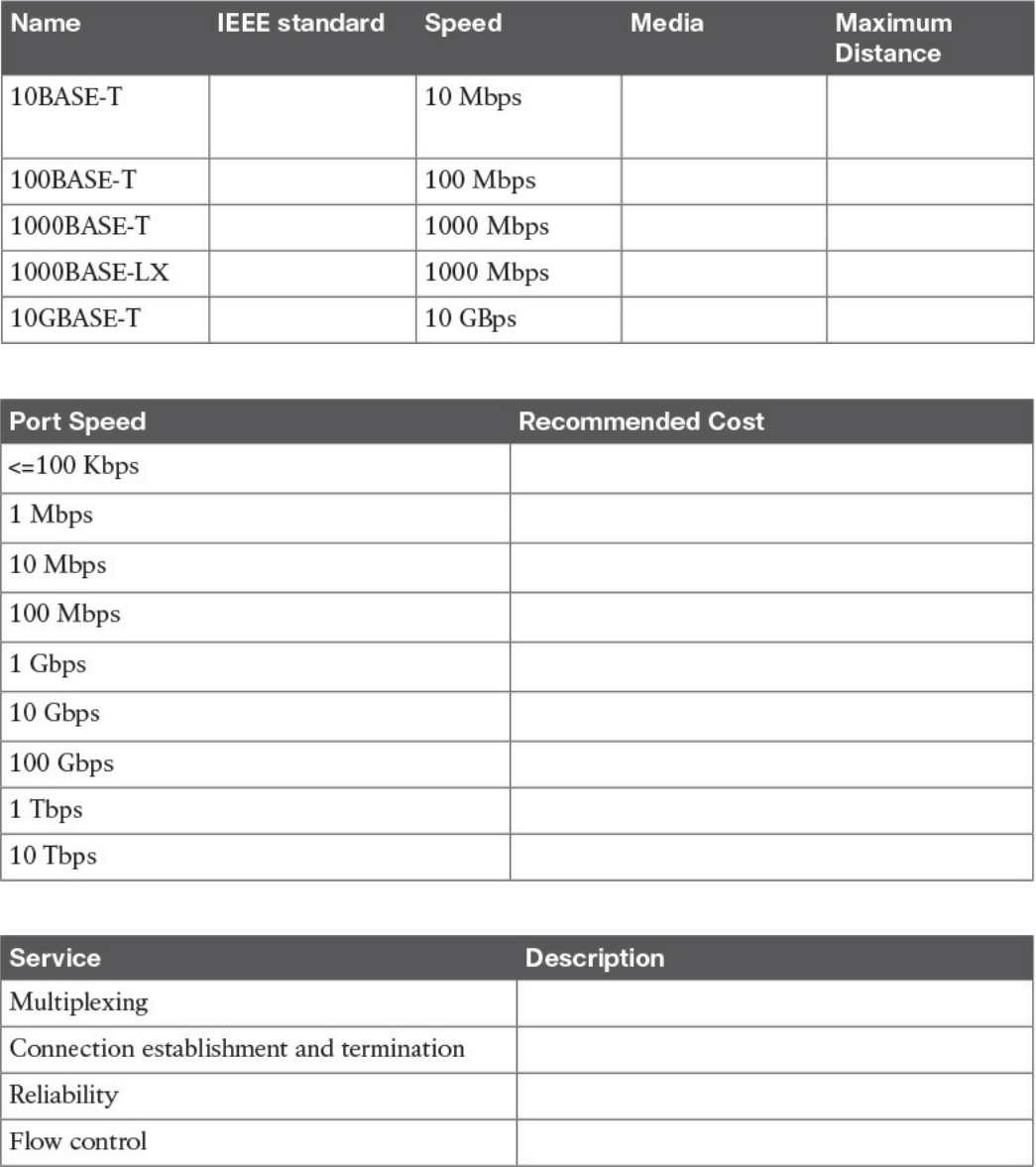

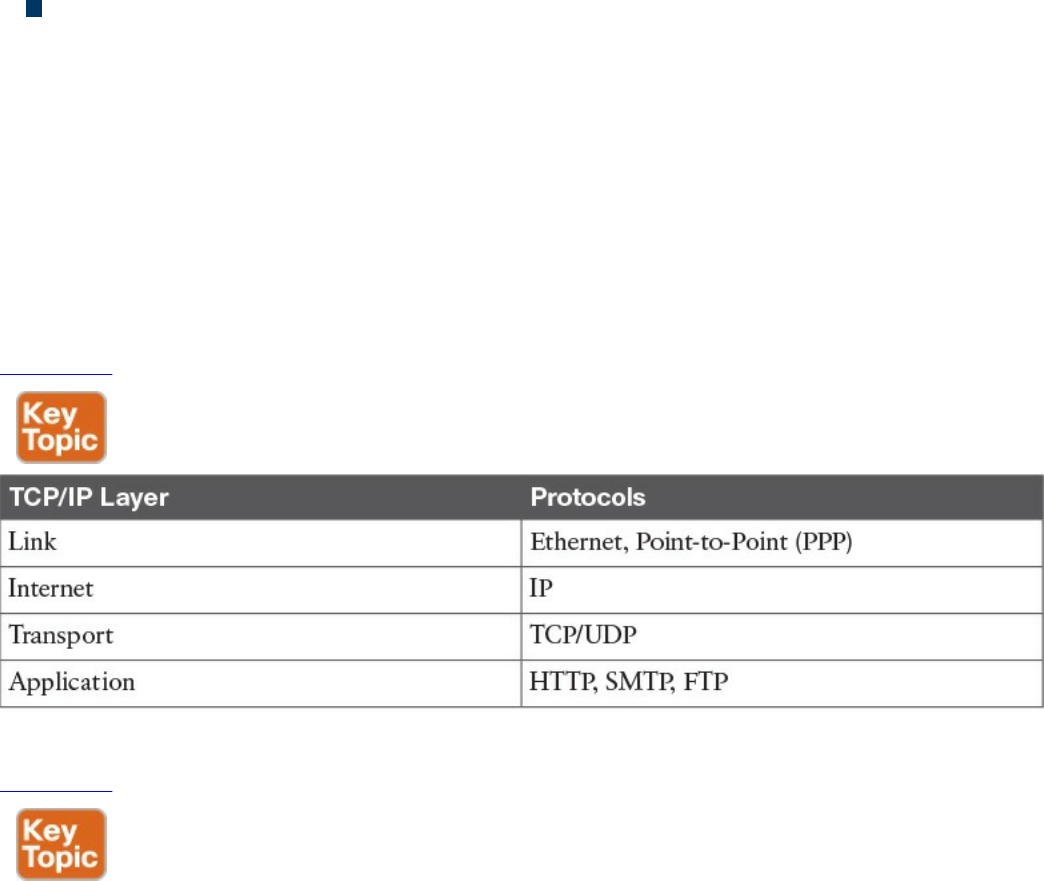

Table 1-2 shows examples of protocols working at each layer of the TCP/IP model.

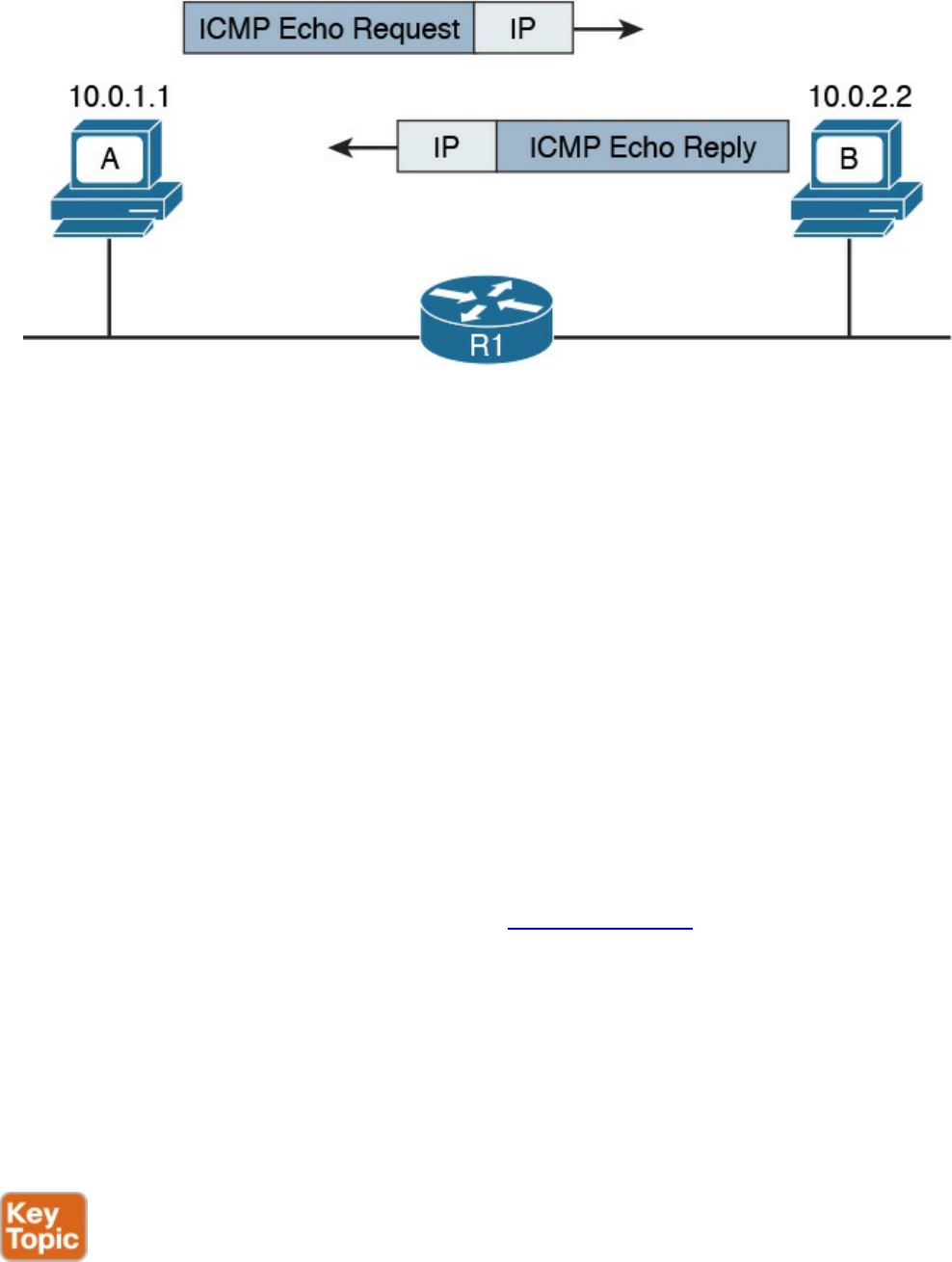

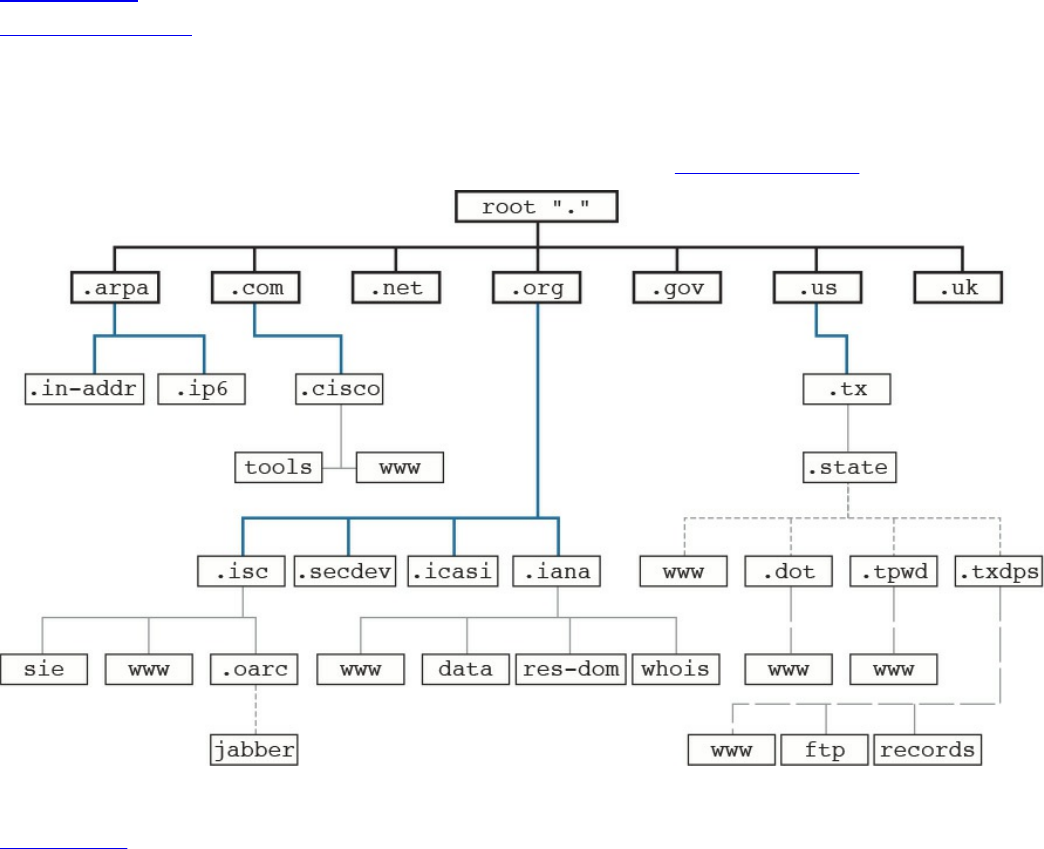

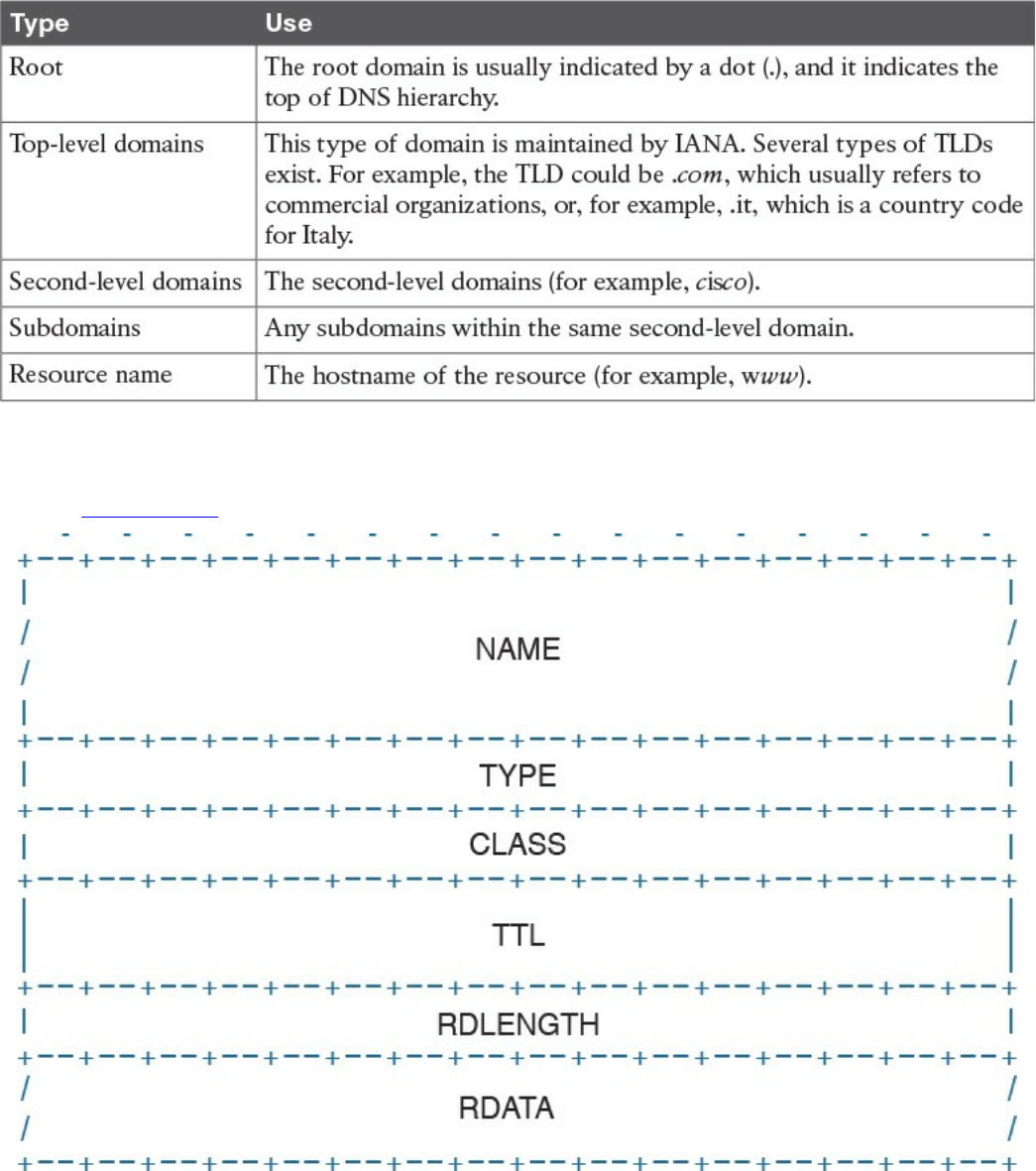

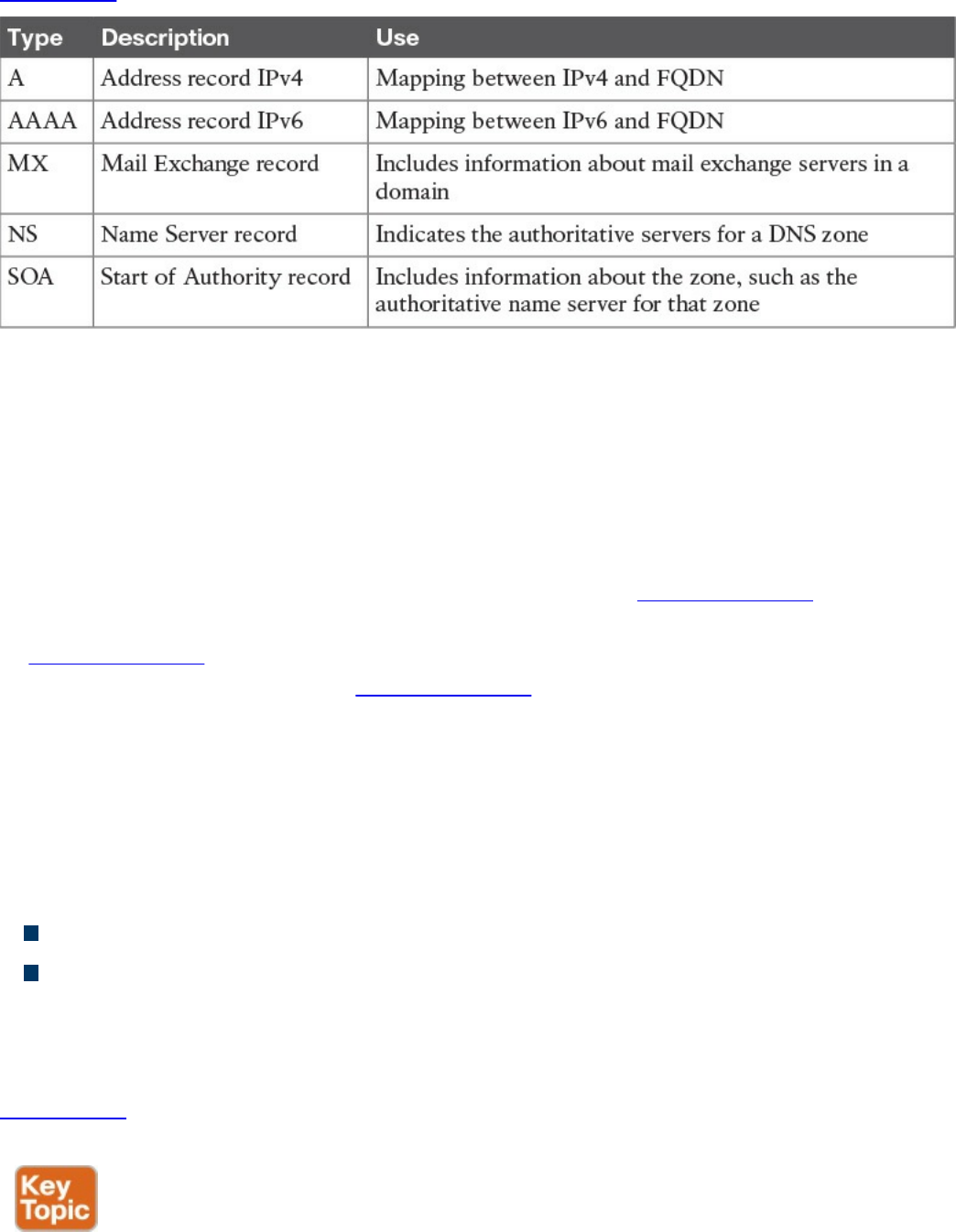

Table 1-2 Protocols at Each Layer of the TCP/IP Model

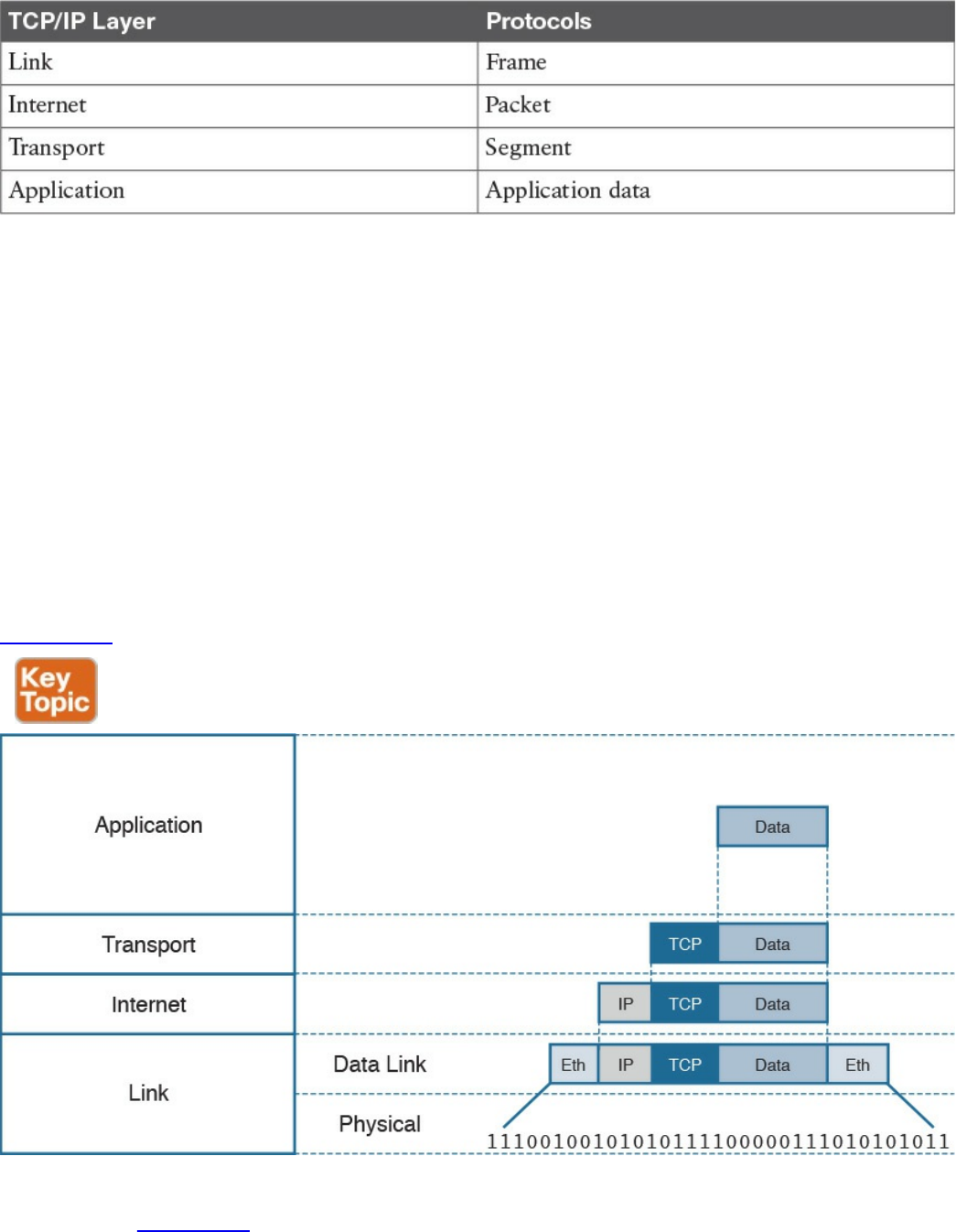

Table 1-3 summarizes what message units are referred to as at each layer.

Table 1-3 Message Unit Naming at Each Layer of the TCP/IP Model

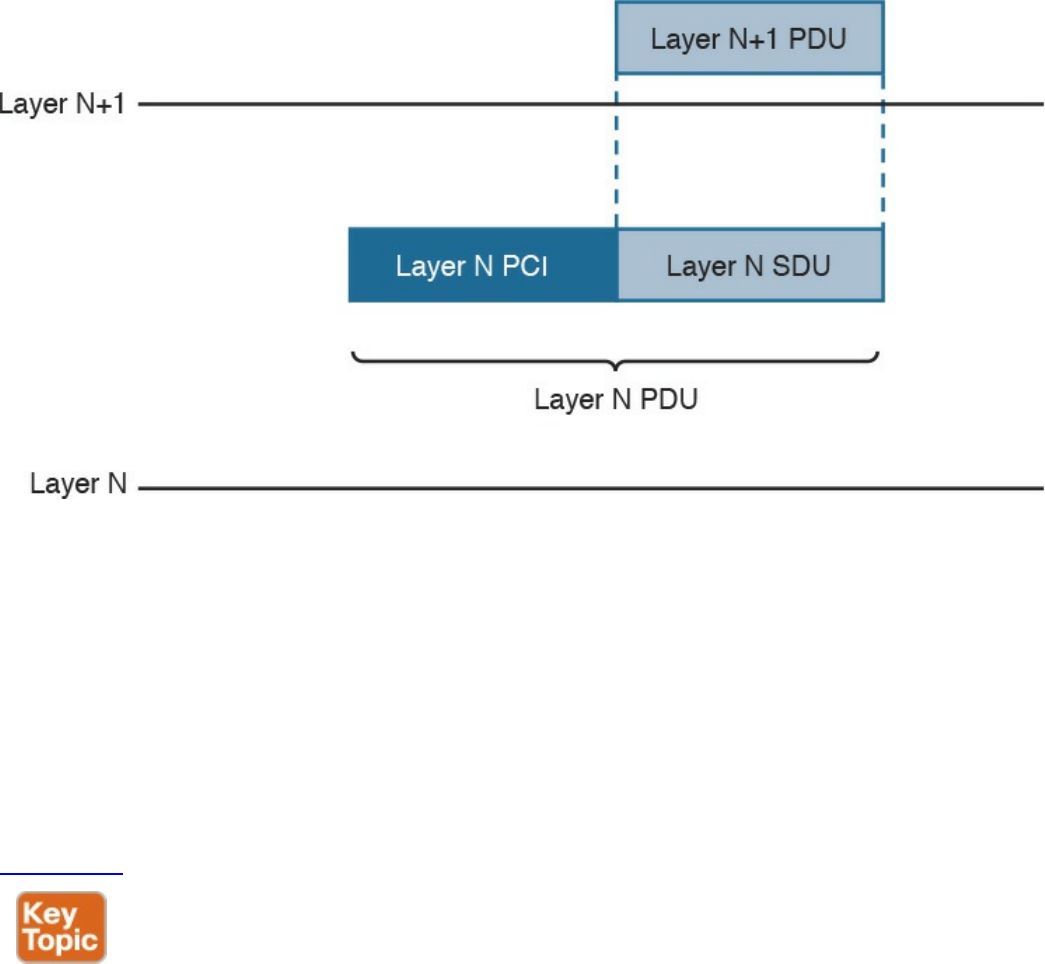

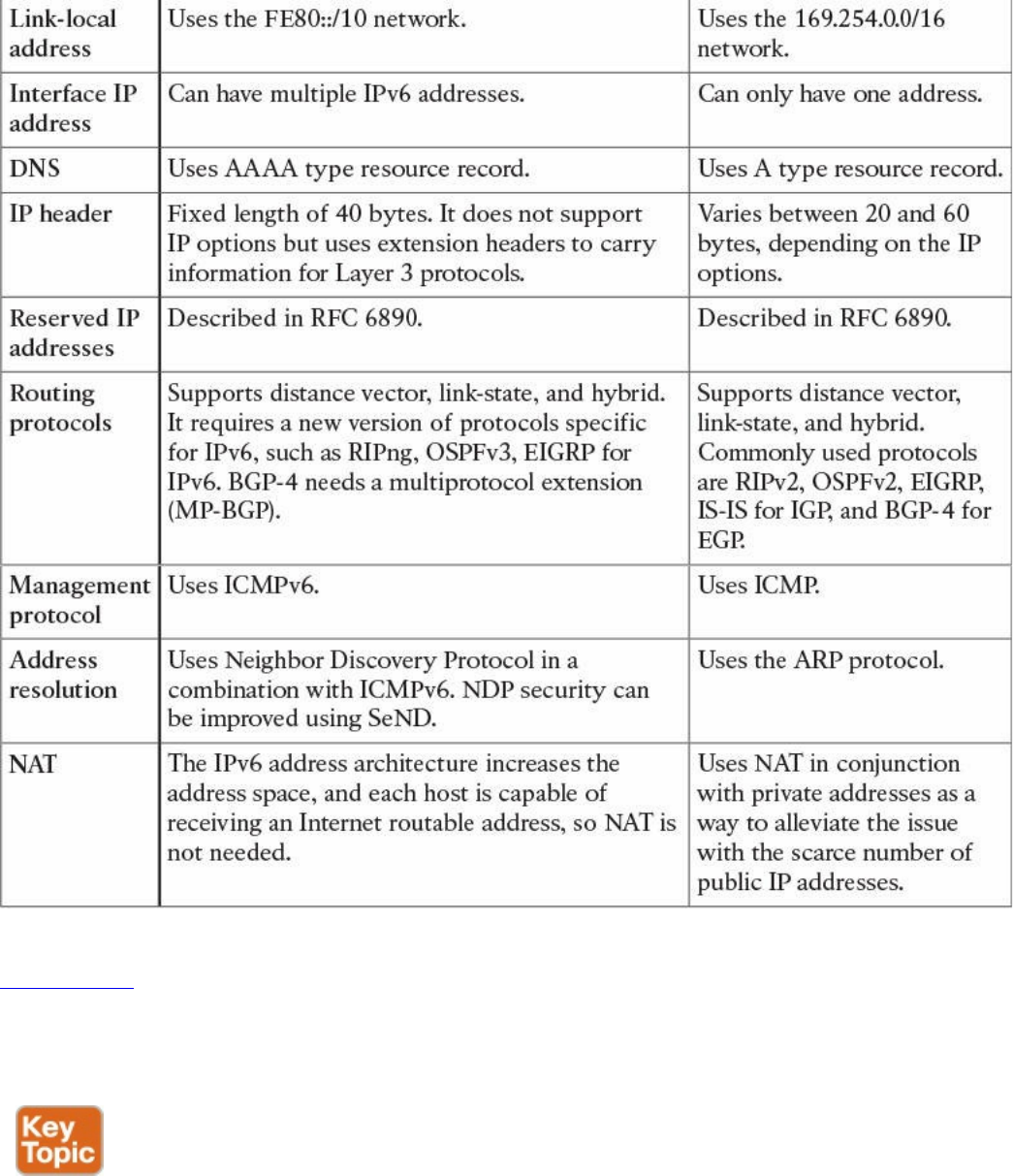

TCP/IP Model Encapsulation

In the TCP/IP model, each layer provides services for the layer above it. Protocols at

each layer add a protocol header, and in some cases a trailer, to the information

provided by the upper layer. The protocol header includes enough information for the

protocol to work toward the delivery of the information. This process is called

encapsulation.

When the information arrives to the destination, the inverse process is used. Each layer

reads the information present in the header of the protocol working at that specific layer,

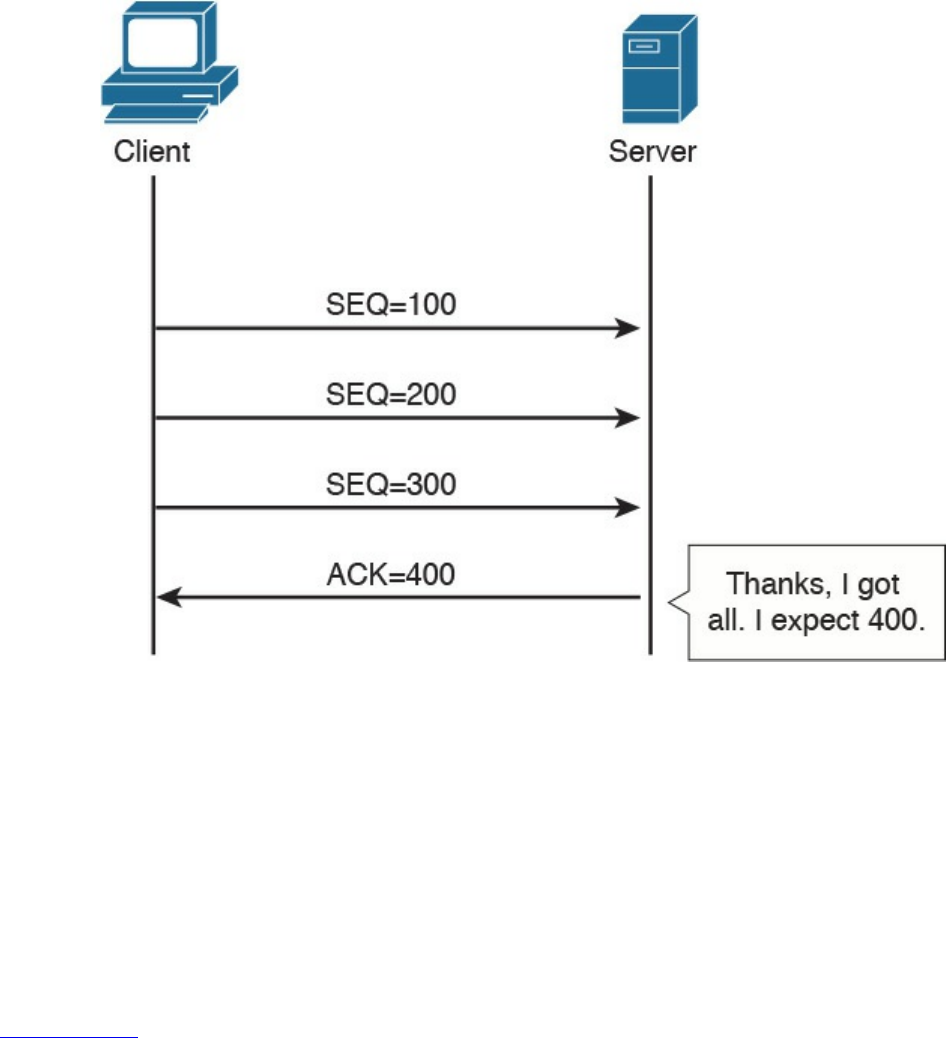

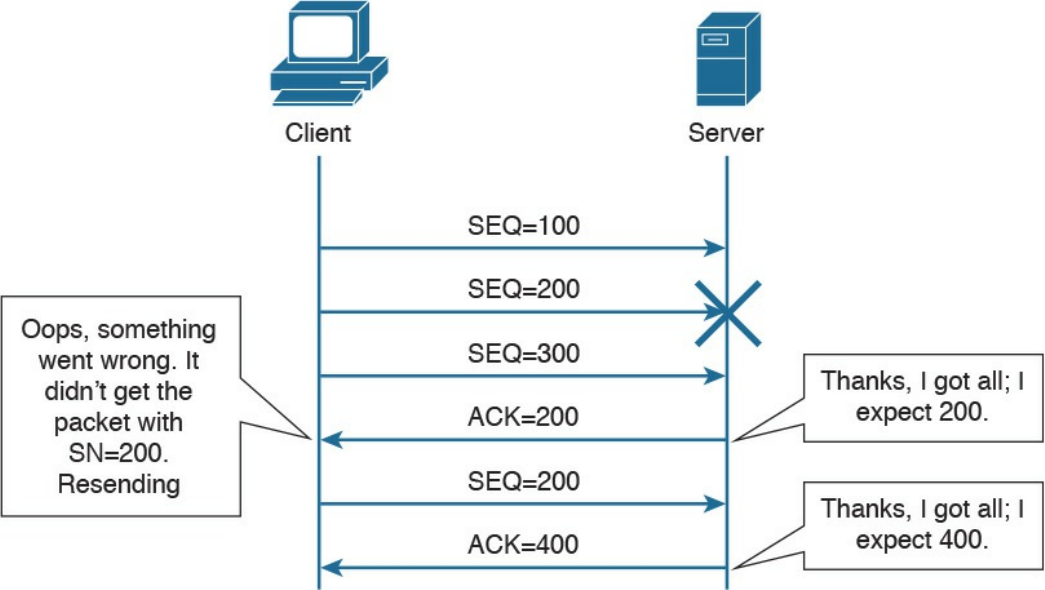

performs an action based on that information, and, if needed, passes the remaining

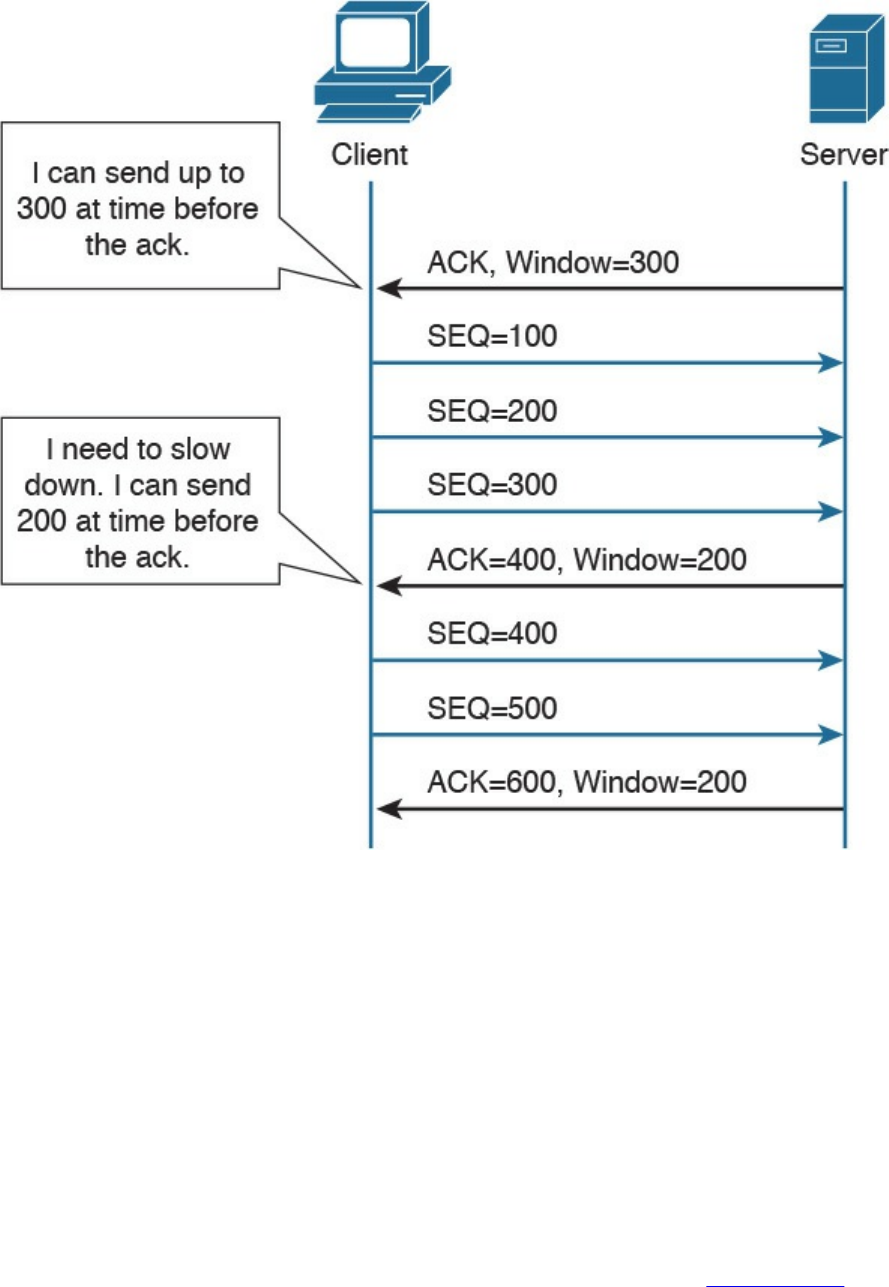

information to the next layer in the stack. This process is called decapsulation.

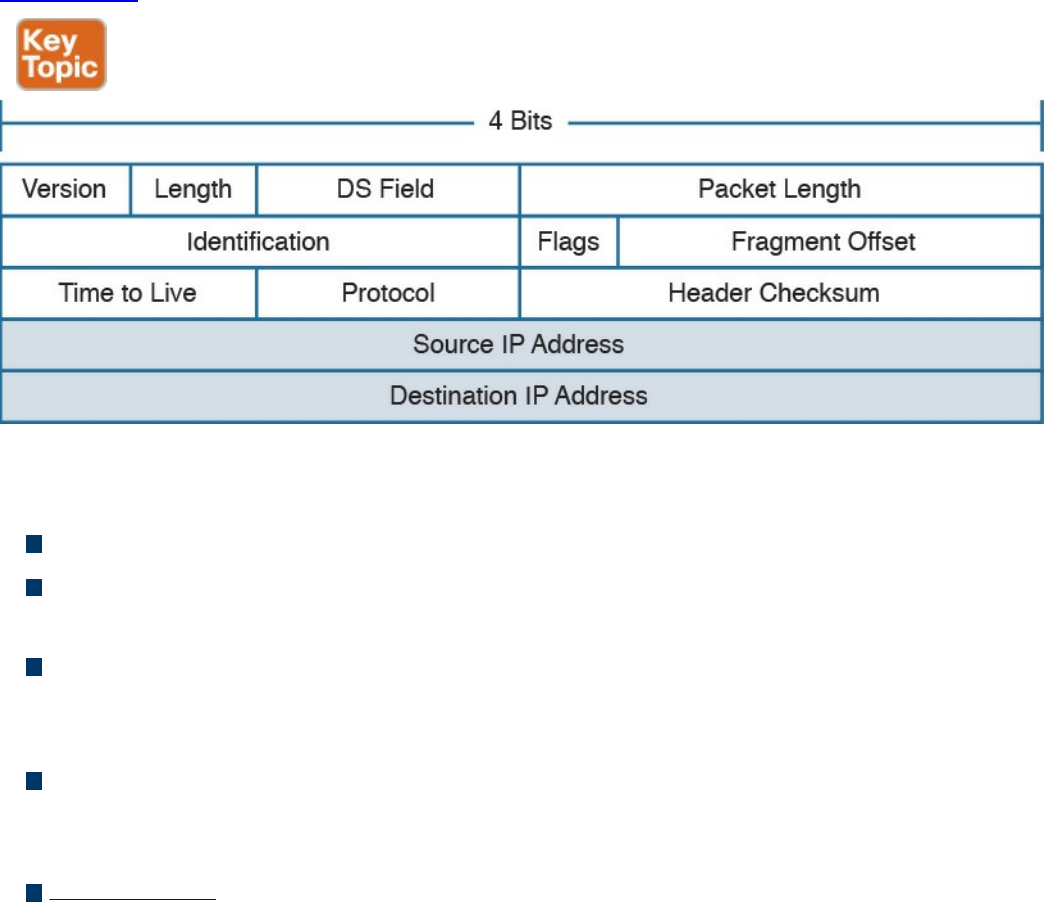

Figure 1-2 shows an example of encapsulation.

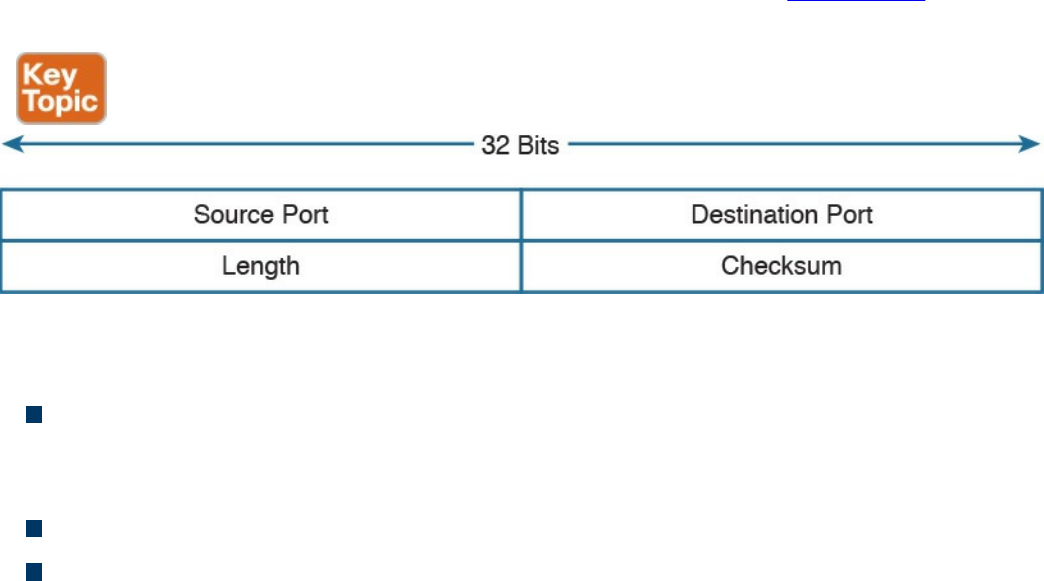

Figure 1-2 Encapsulation

Referring to Figure 1-2, let’s assume that this represents the TCP/IP stack of a host, for

example Host A, trying to request a web page using HTTP. Let’s see how the

encapsulation works, step by step:

Step 1. In this example, the host has requested a web page using the HTTP

application layer protocol. The HTTP application generates the information,

represented as HTTP “data” in this example.

Step 2. On the host, the TCP/IP implementation will detect that HTTP uses TCP at

the transport layer and will send the HTTP data to the transport layer for further

handling. The protocol at the transport layer, TCP, will create a TCP header,

which includes information such as the service port (TCP port 80 for a web

page request), and will send it to the next layer, the Internet layer, for further

processing. The TCP header plus the payload forms a TCP segment.

Step 3. The Internet layer receives the TCP information, attaches an IP header, and

encapsulates it in an IP packet. The IP header will contain information to handle

the packet at the Internet layer. This includes, for example, the IP addresses of

the source and destination.

Step 4. The IP packet is then passed to the link layer for further processing. The

TCP/IP stack detects that it needs to use Ethernet to transmit the frame to the

next device. It will add an Ethernet header and trailer and transmit the frame to

the physical network interface card (NIC), which will take care of the physical

transmission of the frame.

When the information arrives at the destination, the receiving host will start from the

bottom of the TCP/IP stack by receiving an Ethernet frame. The link layer of the

destination host will read and process the header and trailer, and then pass the IP packet

to the Internet layer for further processing.

The same process happens at the Internet layer, and the TCP segment is passed to the

transport layer, which will again process the TCP header information and pass the

HTTP data for final processing to the HTTP application.
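
The encapsulation steps and the reverse process at the receiver can be sketched as follows. This is a minimal illustration under loud assumptions: the headers are simplified text labels, not the real TCP, IP, or Ethernet wire formats, and the destination address 192.0.2.10 is a documentation address chosen for the example.

```python
# Toy headers and trailer; real protocols use binary formats with many more fields.
ETH_HEADER = b"ETH|"
ETH_TRAILER = b"|FCS"


def encapsulate(http_data: bytes) -> bytes:
    """Wrap application data the way Steps 1 to 4 describe."""
    segment = b"TCP|dport=80|" + http_data        # transport layer adds a TCP header
    packet = b"IP|dst=192.0.2.10|" + segment      # internet layer adds an IP header
    frame = ETH_HEADER + packet + ETH_TRAILER     # link layer adds header and trailer
    return frame


def decapsulate(frame: bytes) -> bytes:
    """Strip each layer's header in the opposite order, from the bottom up."""
    if not (frame.startswith(ETH_HEADER) and frame.endswith(ETH_TRAILER)):
        raise ValueError("not a toy Ethernet frame")
    packet = frame[len(ETH_HEADER):-len(ETH_TRAILER)]   # link layer

    ip_tag, _dst, segment = packet.split(b"|", 2)        # internet layer
    if ip_tag != b"IP":
        raise ValueError("not a toy IP packet")

    tcp_tag, _dport, data = segment.split(b"|", 2)       # transport layer
    if tcp_tag != b"TCP":
        raise ValueError("not a toy TCP segment")
    return data                                          # handed to the application
```

With these definitions, encapsulate(b"GET /index.html") returns b"ETH|IP|dst=192.0.2.10|TCP|dport=80|GET /index.html|FCS", and passing that frame to decapsulate returns the original HTTP data.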

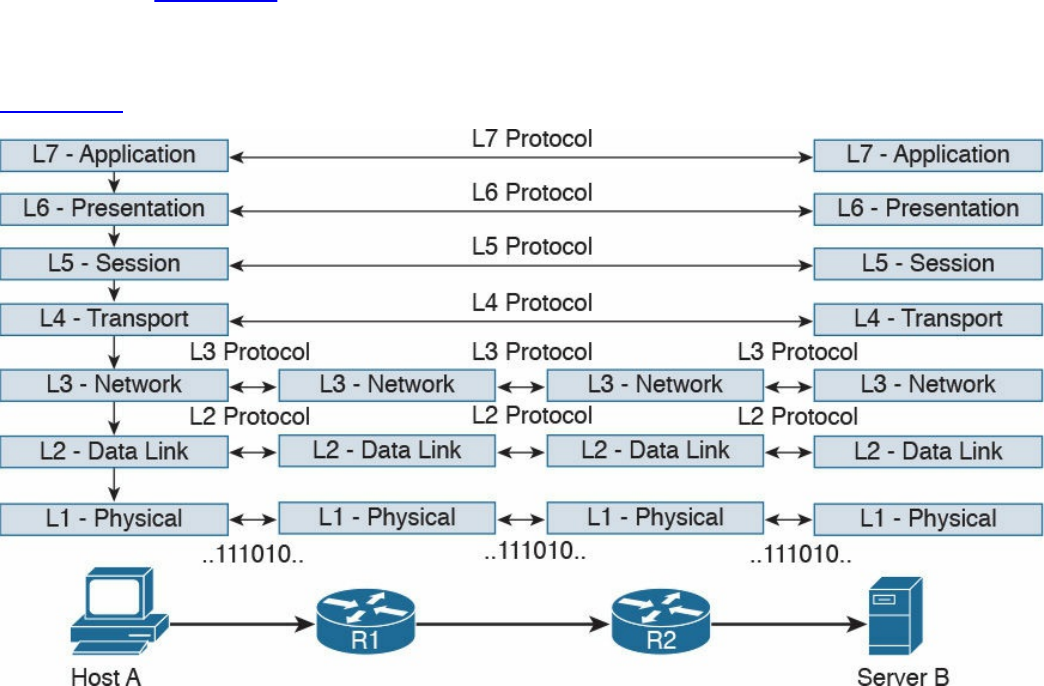

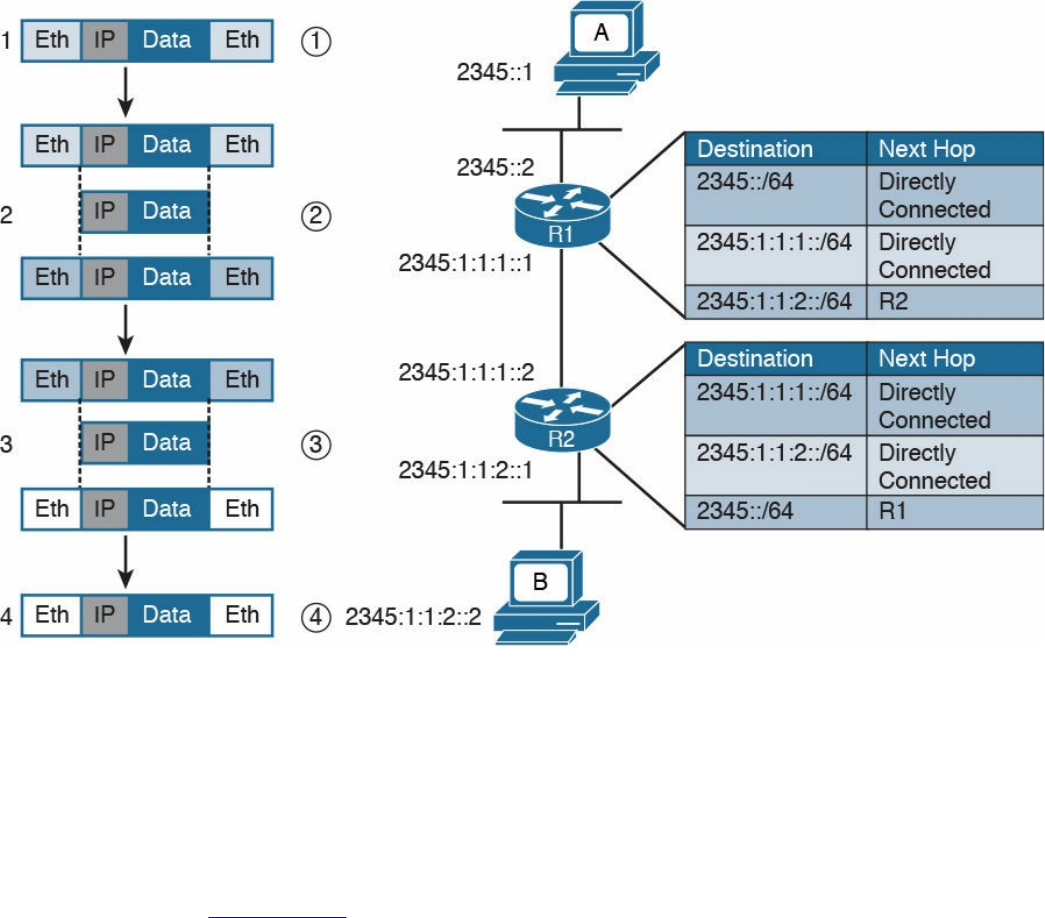

Networking Communication with the TCP/IP Model

Let’s look back at the example of browsing a web page and see how the TCP/IP model

is used to transmit and receive information through a networking connection path.

A networking device is a device that implements the TCP/IP model. The model may be

fully implemented (for example, in the case of a user computer or a server) or partially

implemented (for example, a router might implement the TCP/IP stack only up to the

Internet layer).

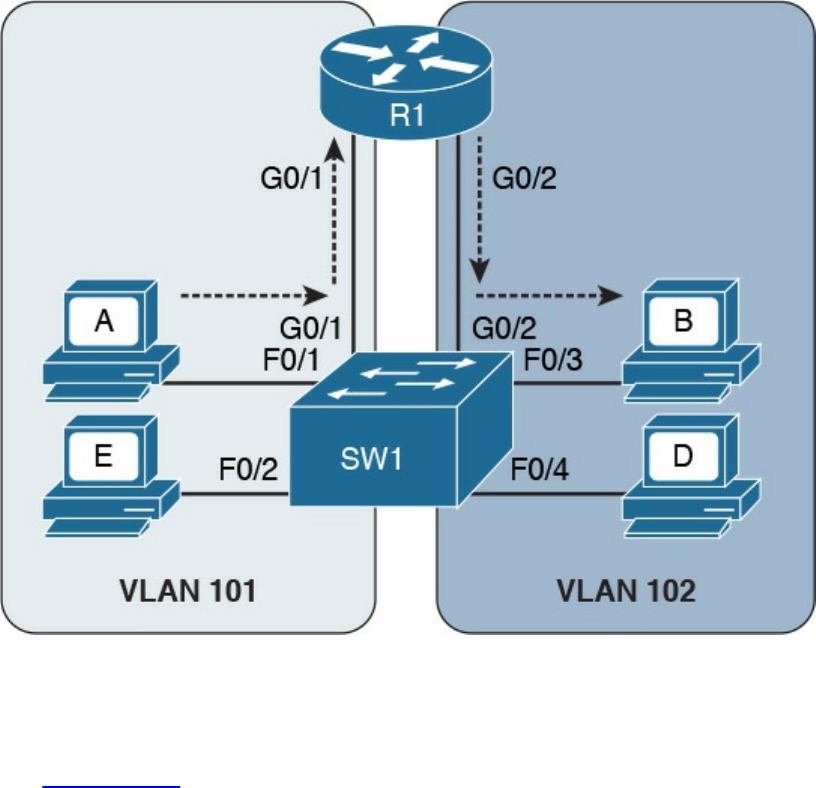

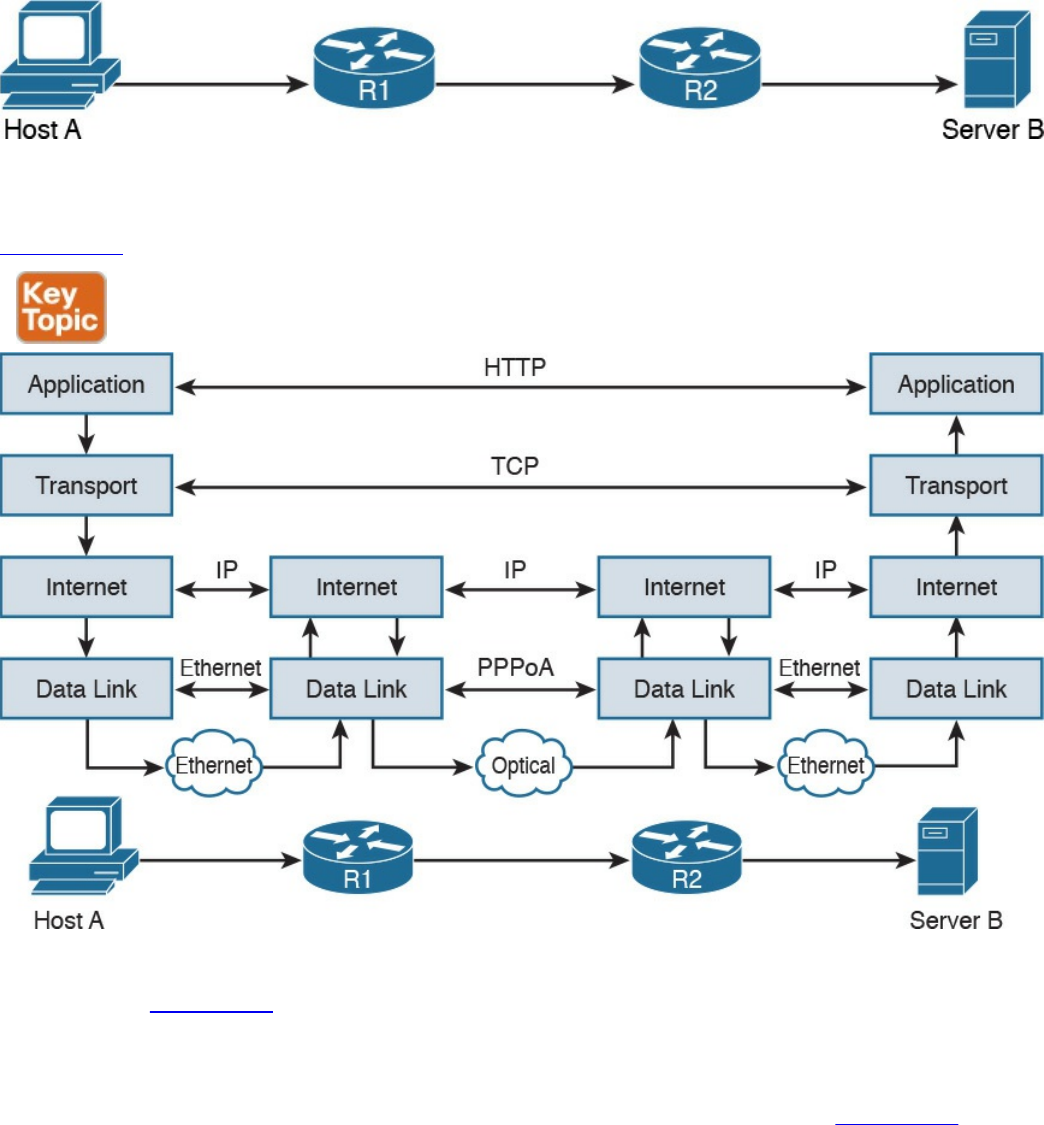

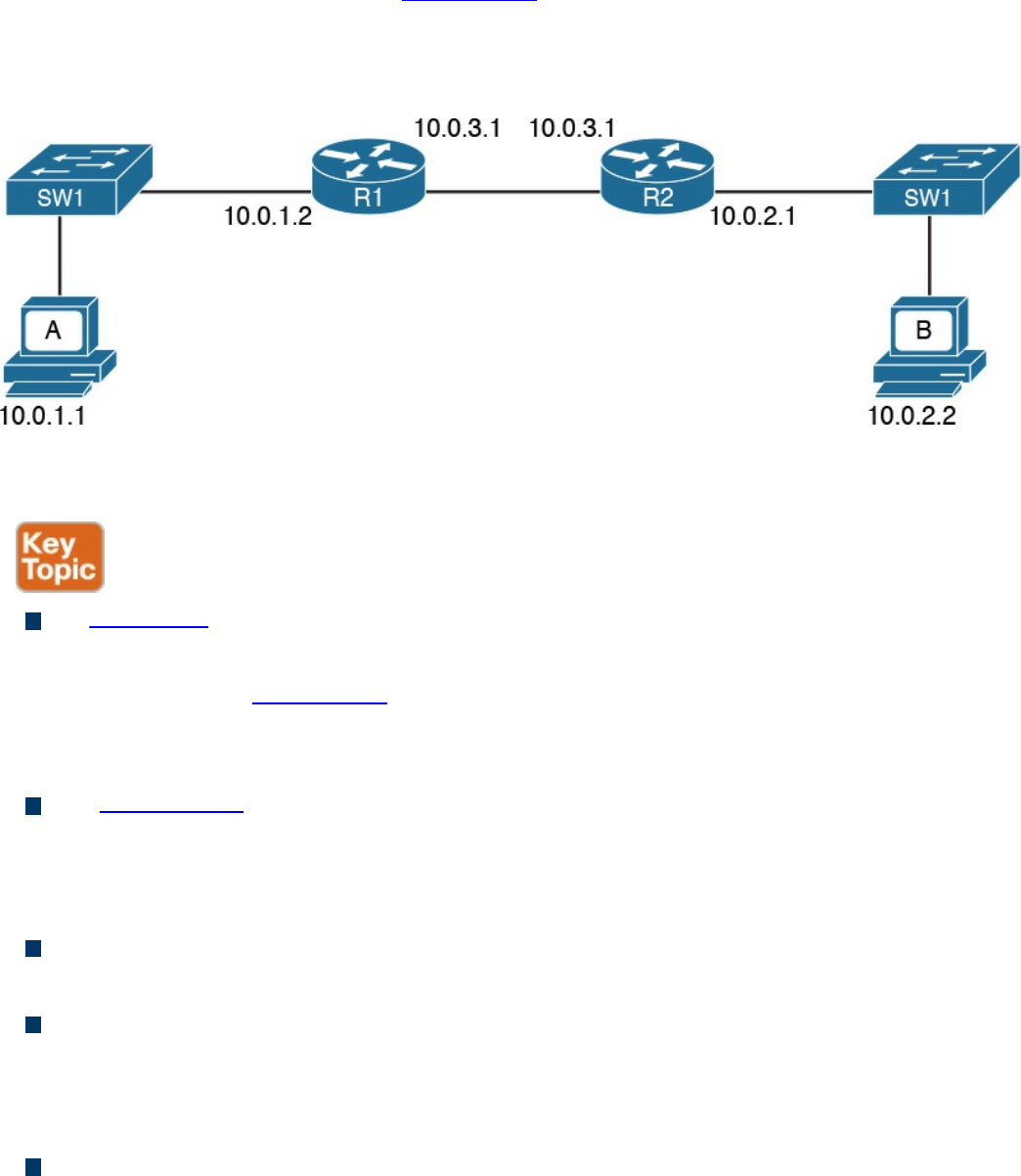

Figure 1-3 shows the logical topology. It includes two hosts: Host A, which is

requesting a web page, and Server B, which is the destination of the request. The

network connectivity is provided by two routers: R1 and R2, which are connected via

an optical link. The host and server are directly connected to R1 and R2, respectively,

with a physical cable.

Figure 1-3 Logical Topology Demonstrating Networking Communication with

TCP/IP Model

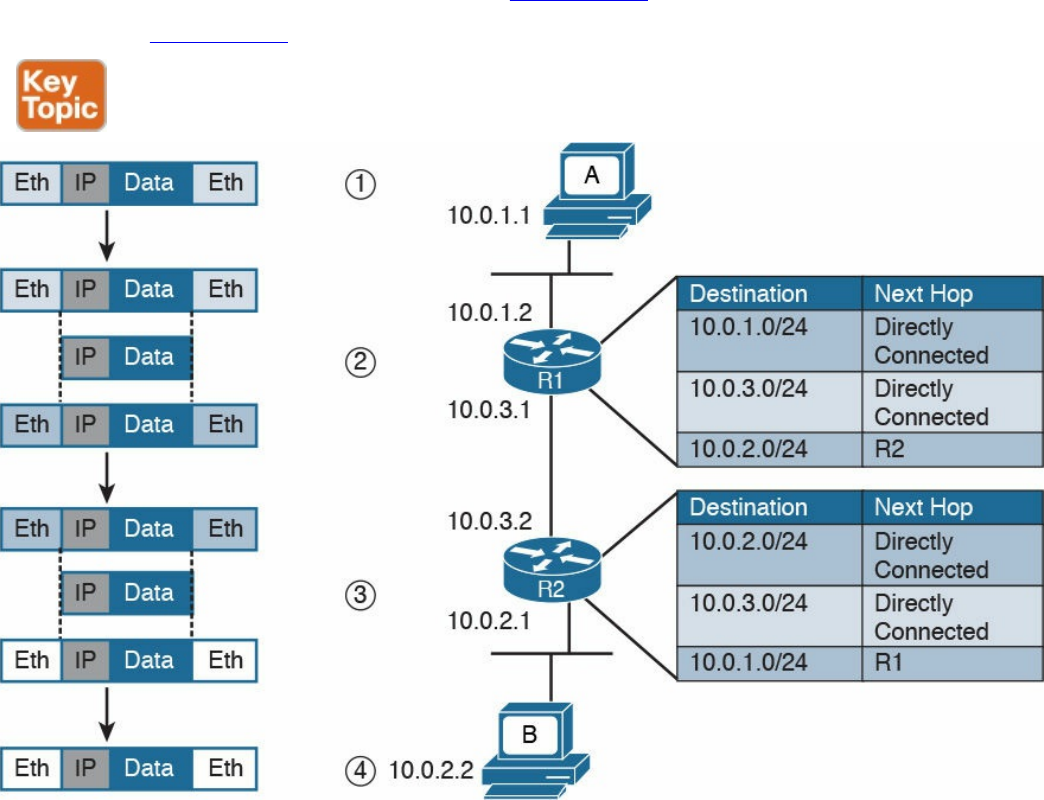

Figure 1-4 shows how each TCP/IP model layer interacts in this case.

Figure 1-4 Interaction of the TCP/IP Model Layers

Referring to Figure 1-4, let’s see how the steps are executed:

Step 1. The HTTP application on Host A will create an HTTP Application message

that includes an HTTP header and the contents of the request in the payload.

This will be encapsulated up to the link layer, as described in Figure 1-2, and

transmitted over the cable to R1.

Step 2. The R1 link layer will receive the frame, extract the IP packet, and send it to

the IP layer. Because the main function of the router is to forward the IP packet,

it will not further decapsulate the packet. It will use the information in the IP

header to forward the packet to the best next router, R2. To do that, it will

encapsulate the IP packet in a new link layer frame (for example, Point-to-Point

Protocol over ATM [PPPoA]) and send the frame on the physical link toward R2.

Step 3. R2 will follow the same process that R1 followed in step 2 and will send the

IP packet encapsulated in a new Ethernet frame to Server B.

Step 4. Server B’s link layer will decapsulate the frame and send it to the Internet

layer.

Step 5. The Internet layer detects that the packet is destined to Server B itself by

looking into the IP header information (more specifically the value of the

destination IP address). It strips the IP header and passes the TCP segment to

the transport layer.

Step 6. The transport layer uses the port information included in the TCP header to

determine to which application to pass the data (in this case, the web service

application).

Step 7. The application layer, the web service, finally receives the request and may

decide to respond (for example, by providing the web page to Host A). The

process will start again, with the web service creating some data and passing it

to the HTTP application layer protocol for handling.

The example in Figure 1-4 is simplified. For instance, it does not show that TCP requires a

connection to be established before transmitting data. However, it is important that the main idea

behind the TCP/IP model is clear as a basis for understanding how the various

protocols work.
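
The hop-by-hop behavior in these steps, where the IP packet stays the same while each link uses a fresh frame, can be sketched as a small simulation. Everything here is an assumption made for illustration: the IP addresses, the ROUTES table, and the Packet and Frame types are hypothetical, and real routers choose the next hop with far richer forwarding tables.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Packet:
    """Hypothetical, simplified IP packet."""
    src_ip: str
    dst_ip: str
    payload: bytes


@dataclass(frozen=True)
class Frame:
    """Hypothetical link-layer frame carrying one IP packet."""
    link_type: str
    packet: Packet


SERVER_B_IP = "203.0.113.20"  # assumed address for Server B

# Per-node forwarding table: destination IP -> (next node, outgoing link type).
ROUTES = {
    "Host A": {SERVER_B_IP: ("R1", "Ethernet")},
    "R1": {SERVER_B_IP: ("R2", "PPPoA")},
    "R2": {SERVER_B_IP: ("Server B", "Ethernet")},
}


def send(packet: Packet, start: str = "Host A") -> list[str]:
    """Forward the packet hop by hop and return a trace of the links used."""
    trace = []
    node = start
    while node in ROUTES:
        next_node, link_type = ROUTES[node][packet.dst_ip]
        frame = Frame(link_type, packet)   # encapsulate in a new frame for this link
        trace.append(f"{node} -> {next_node} over {frame.link_type}")
        packet = frame.packet              # receiver strips the frame; packet is unchanged
        node = next_node
    return trace
```

Sending Packet("198.51.100.5", SERVER_B_IP, b"GET /") produces three trace entries, one per link. The same packet object travels the whole path, while the frame type changes from Ethernet to PPPoA and back to Ethernet.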

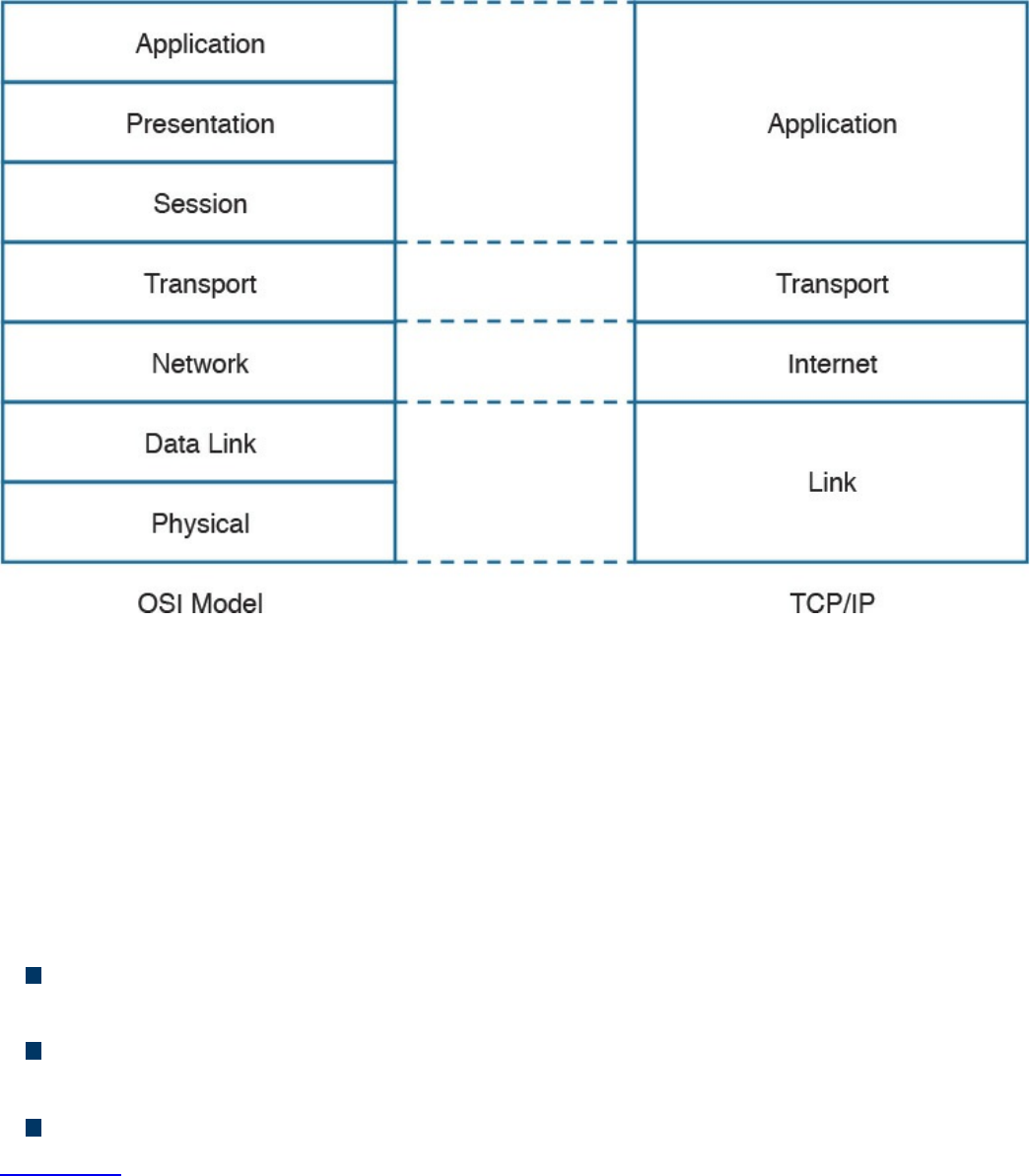

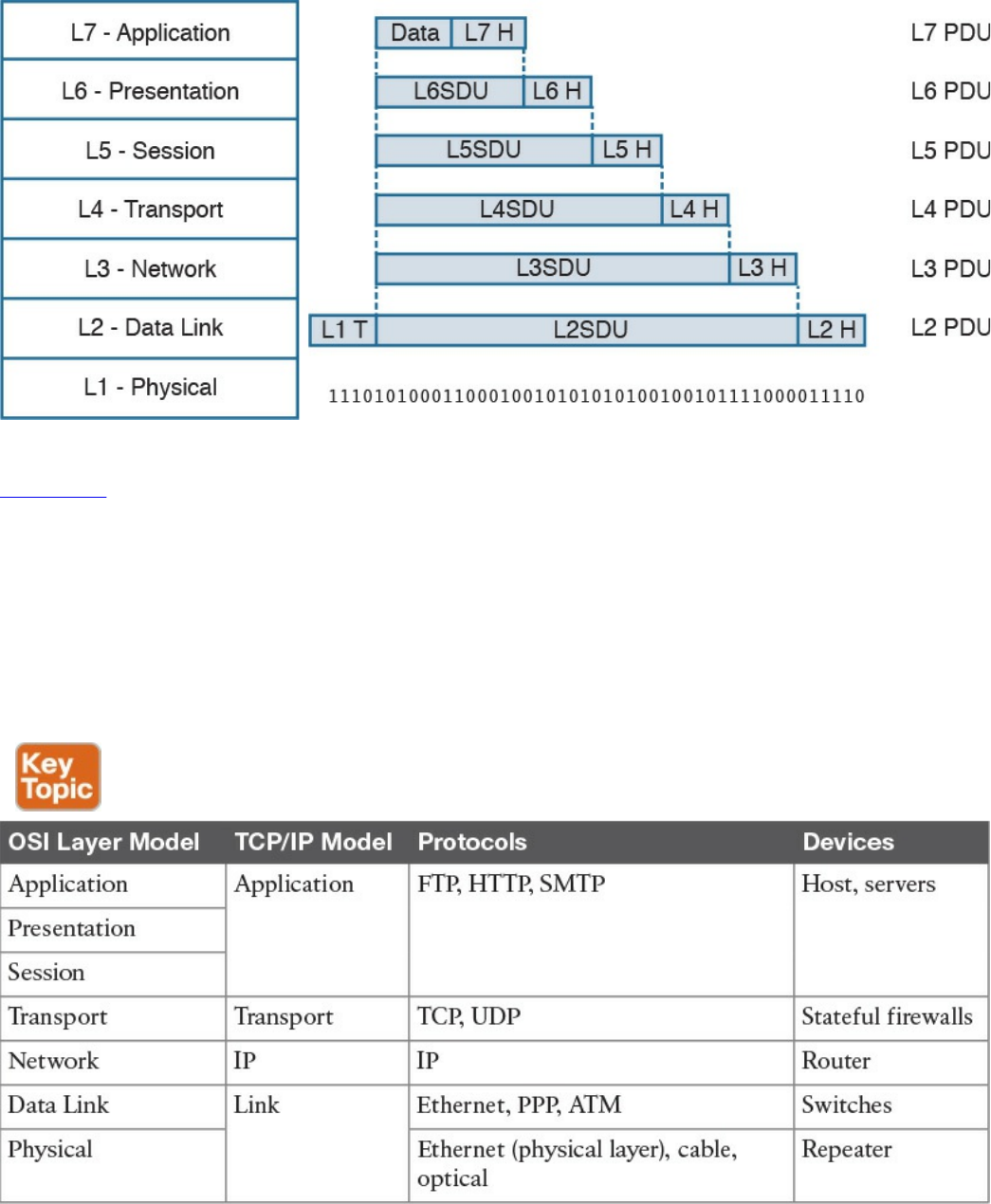

Open System Interconnection Model

The Open System Interconnection (OSI) reference model is another model that uses

abstraction layers to represent the operation of communication systems. The idea behind

the design of the OSI model is to be comprehensive enough to take into account

advancement in network communications and to be general enough to allow several

existing models for communication systems to transition to the OSI model.

The OSI model presents several similarities with the TCP/IP model described in the

previous section. One of the most important similarities is the use of abstraction layers.

As with TCP/IP, each layer provides service for the layer above it within the same

computing device, while it interacts at the same layer with other computing devices.

The OSI model includes seven abstract layers, each representing a different function and

service within a communication network:

Physical layer—Layer 1 (L1): Provides services for the transmission of bits over

the data link.

Data link layer—Layer 2 (L2): Includes protocols and functions to transmit

information over a link between two connected devices. For example, it provides

flow control and L1 error detection.

Network layer—Layer 3 (L3): This layer includes the function necessary to

transmit information across a network and provides abstraction on the underlying

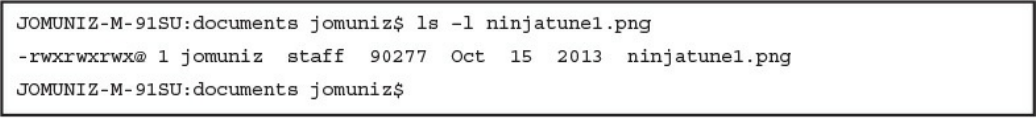

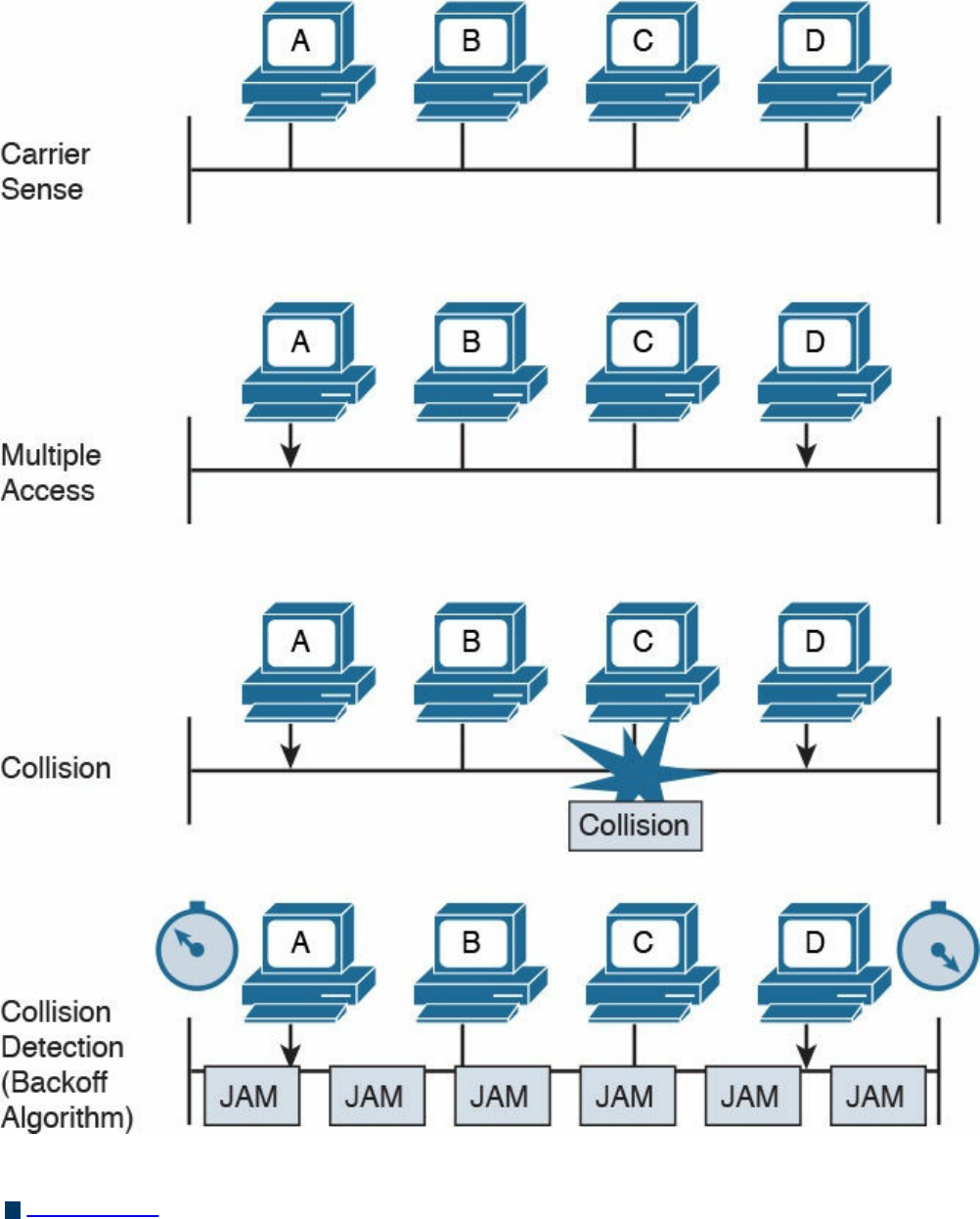

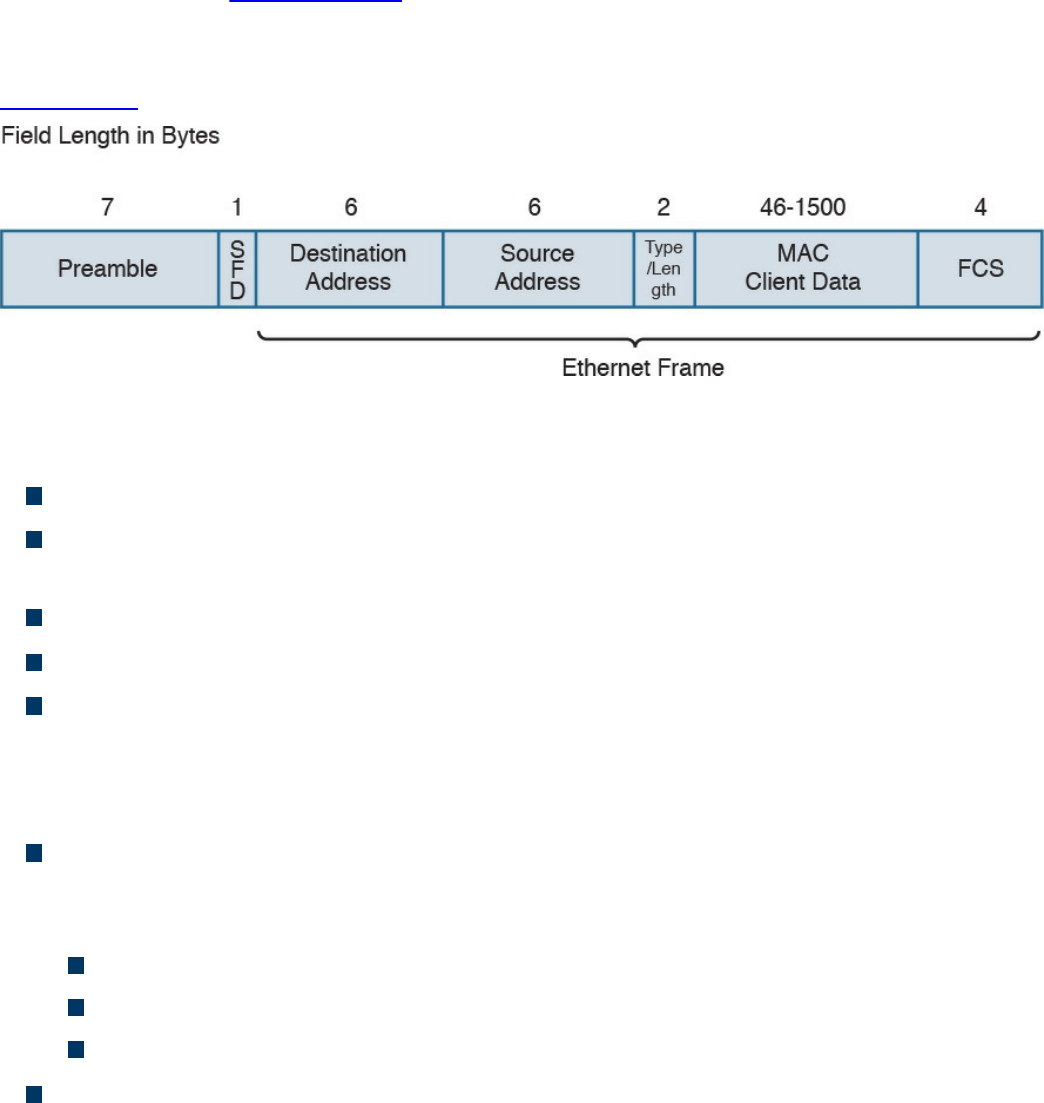

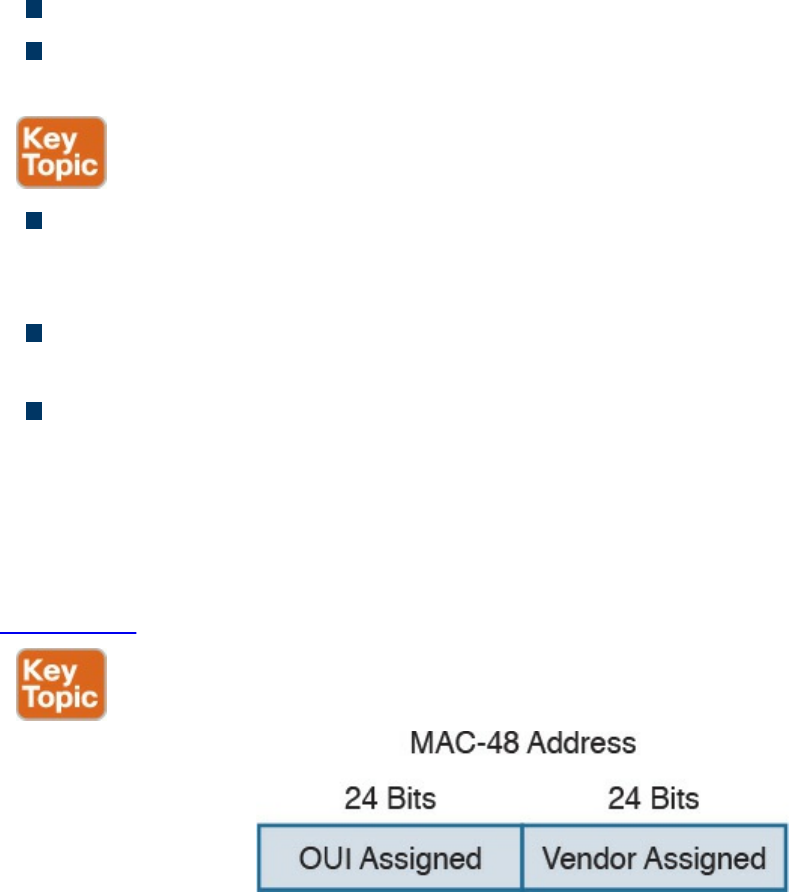

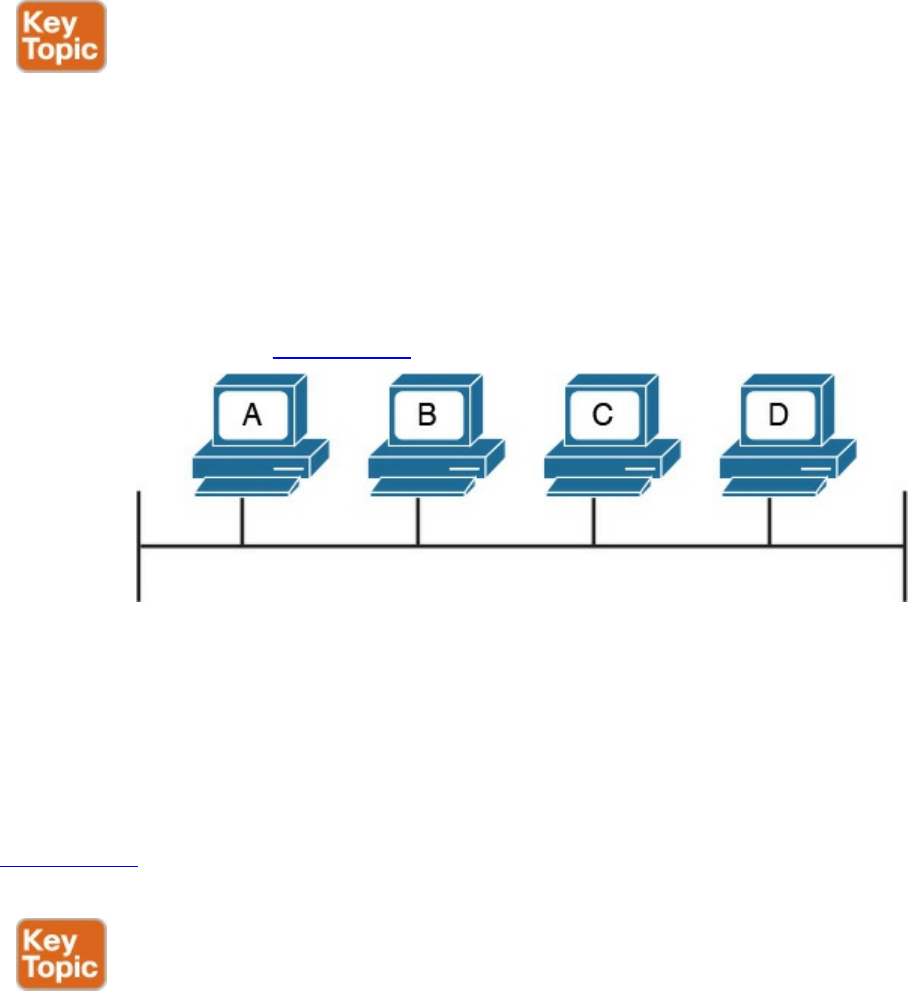

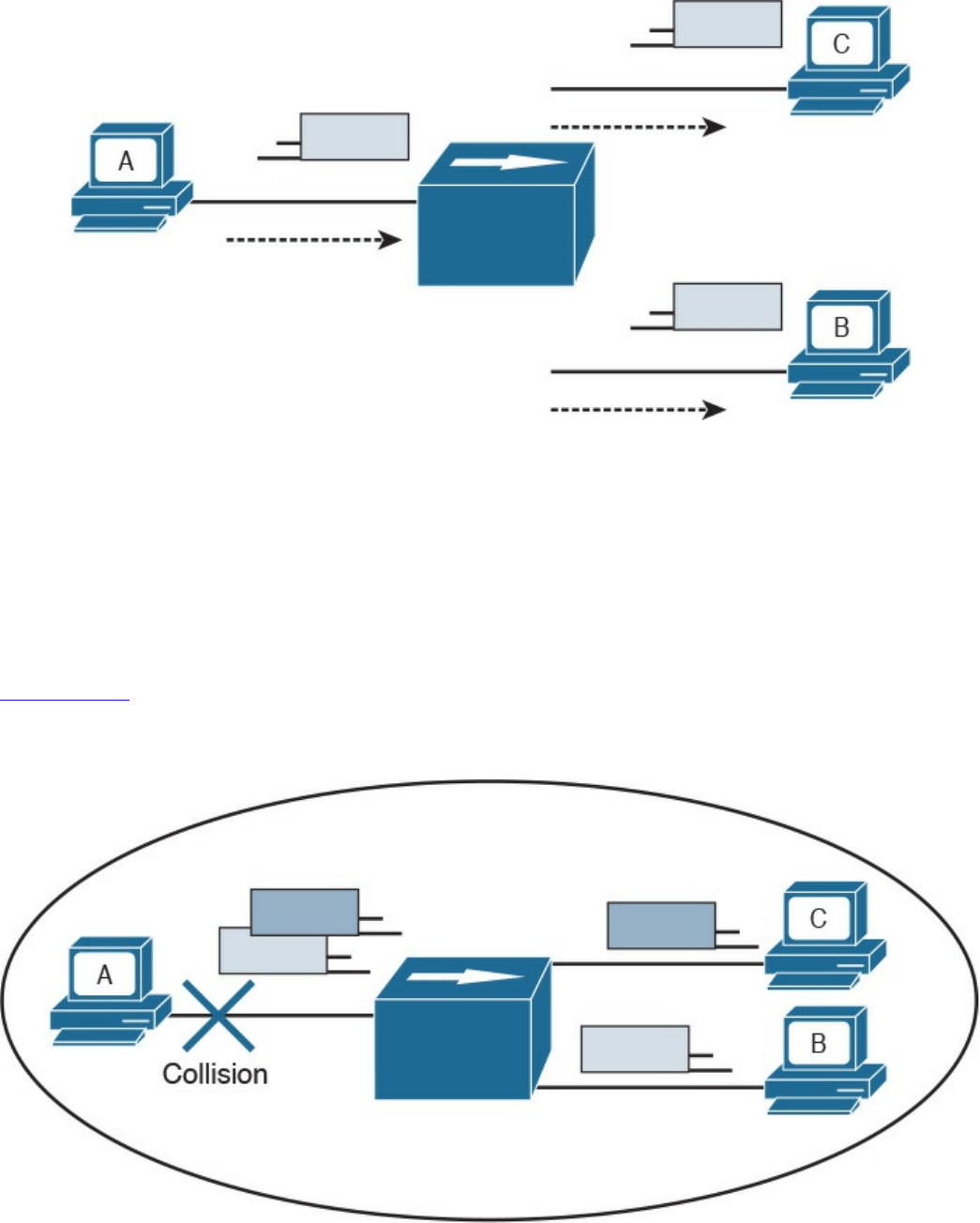

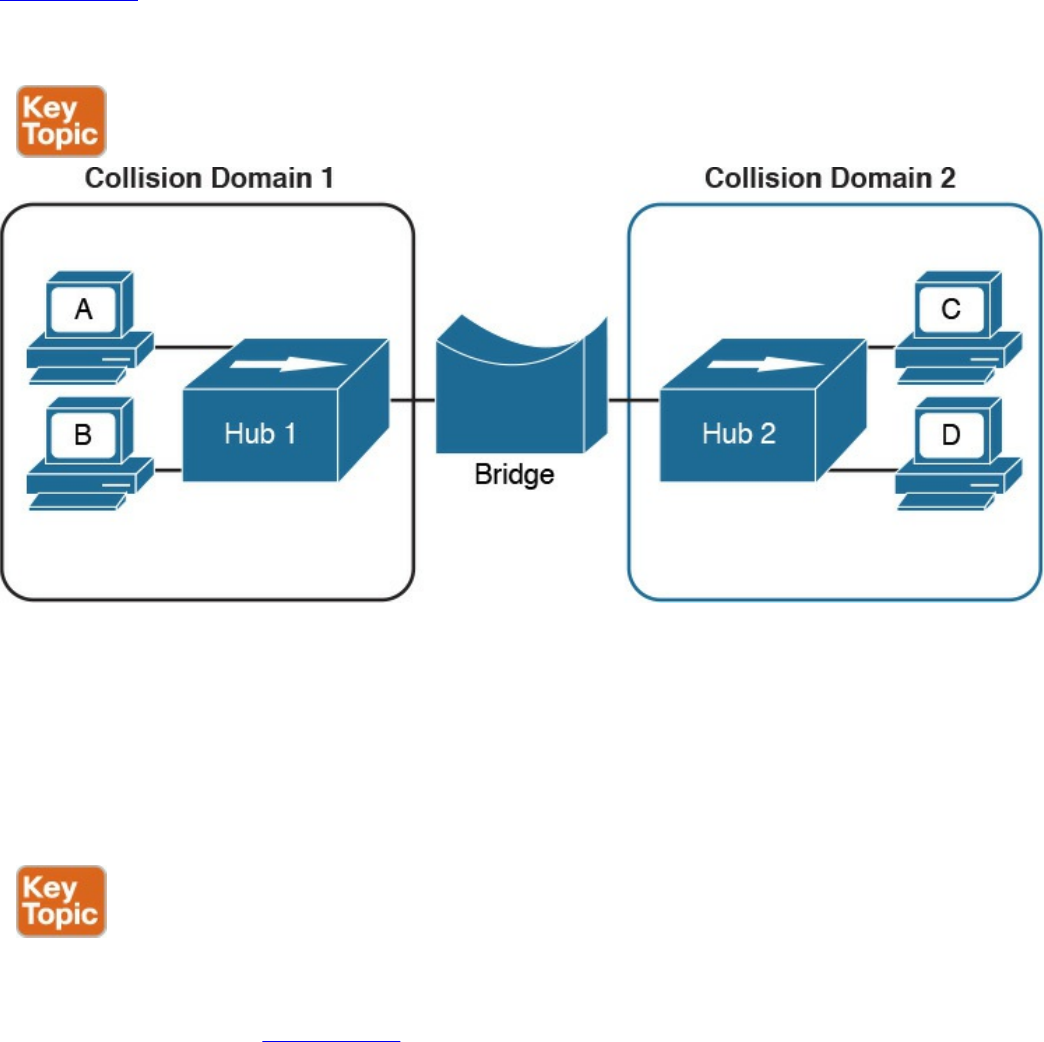

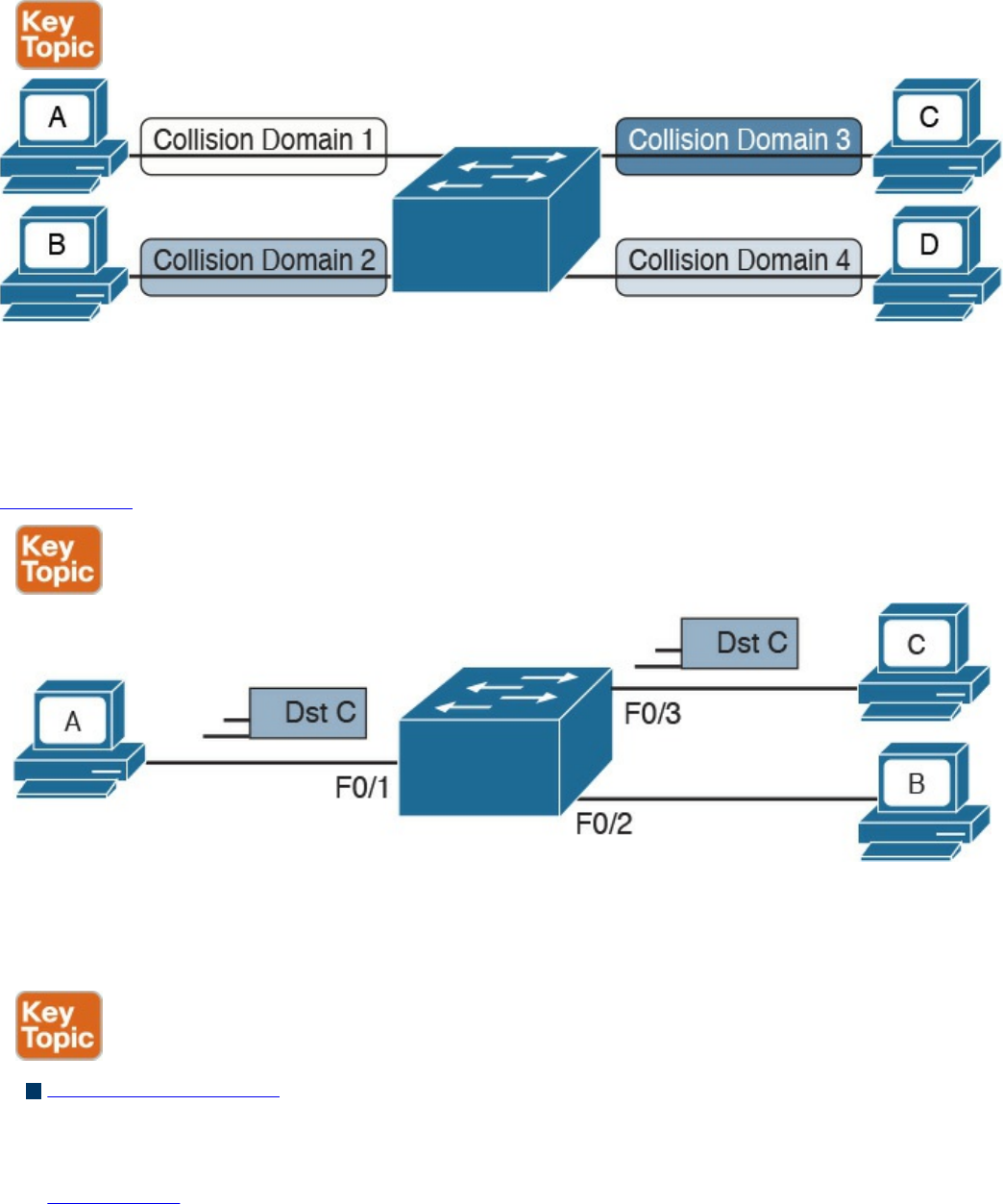

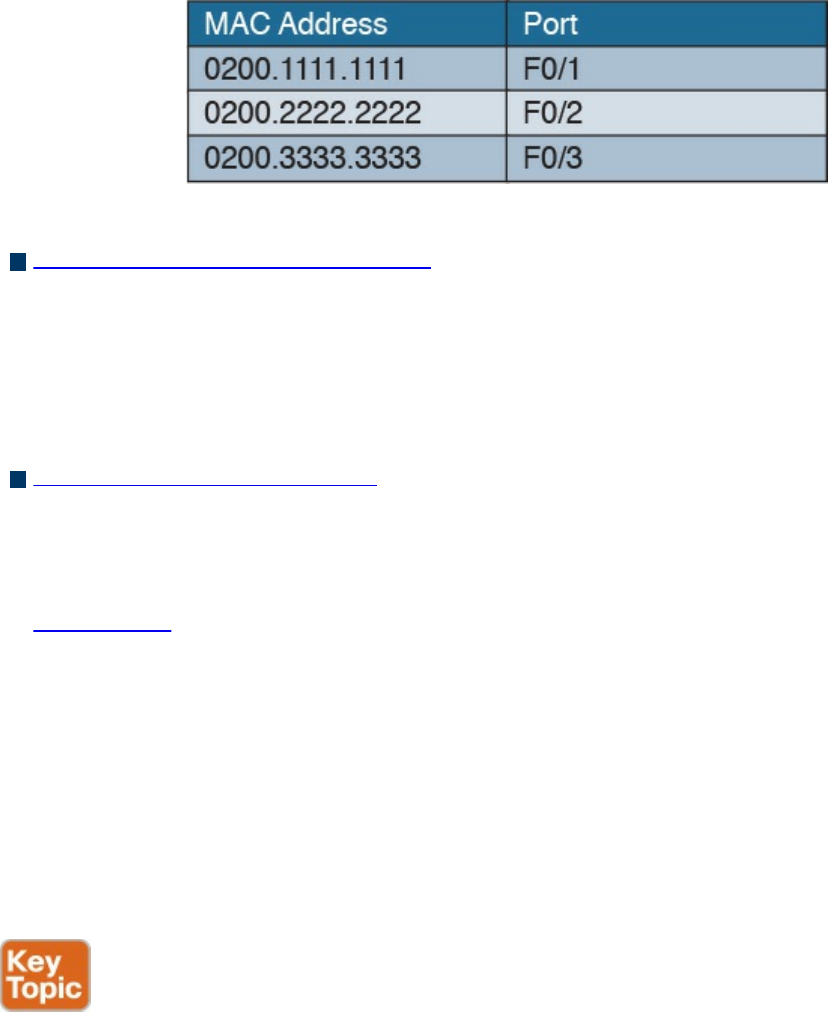

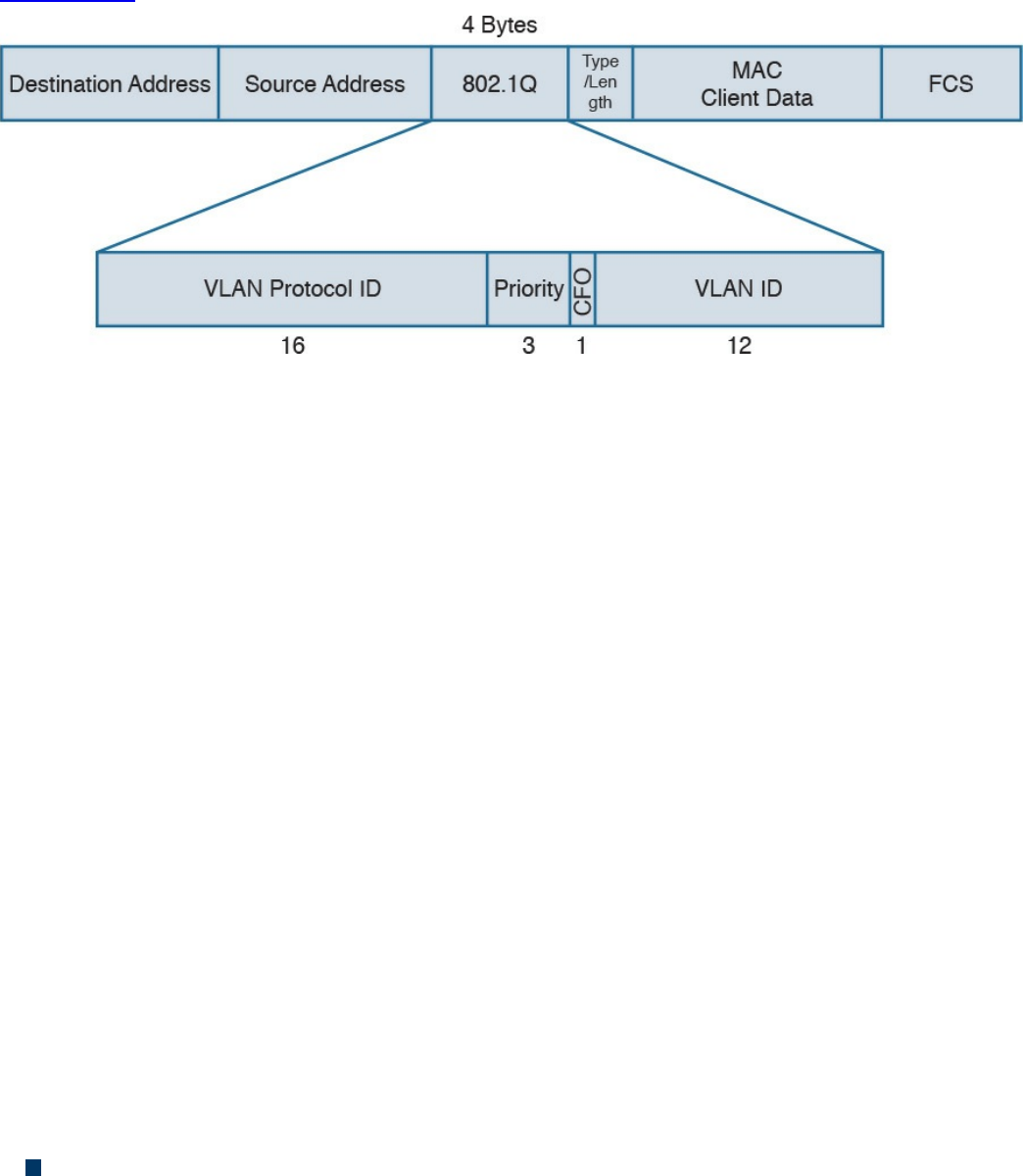

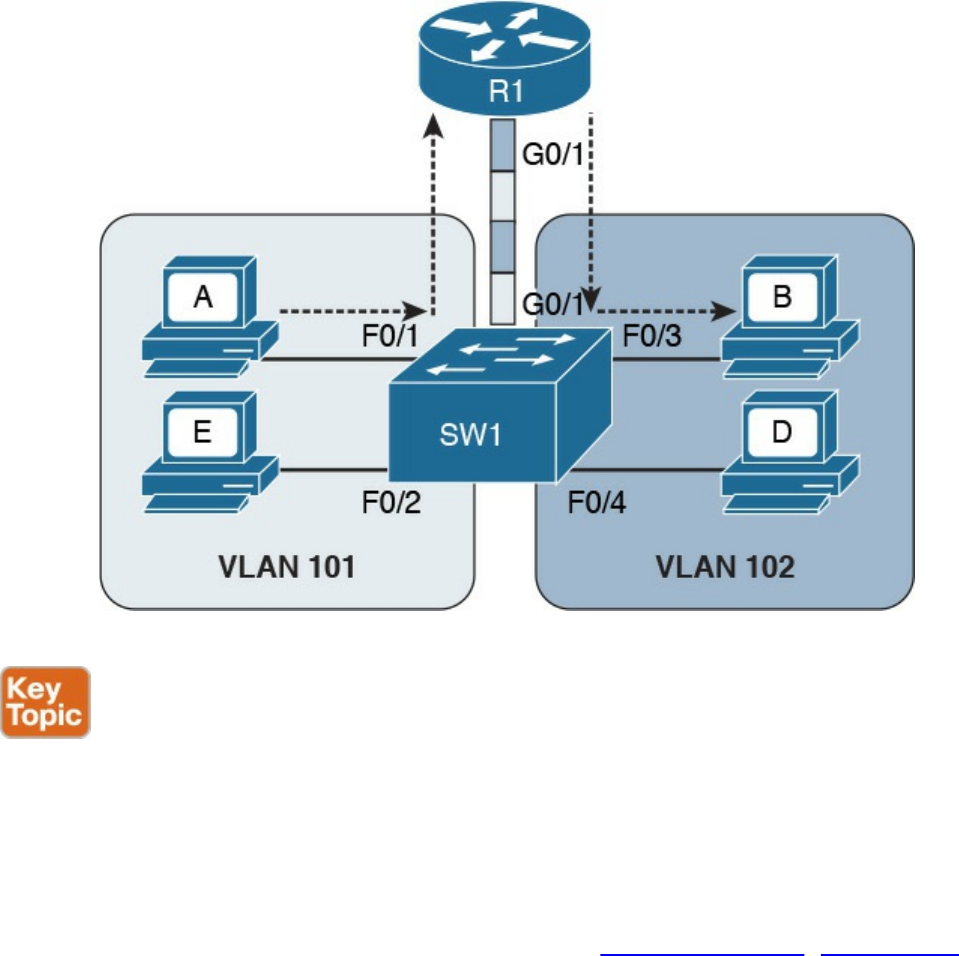

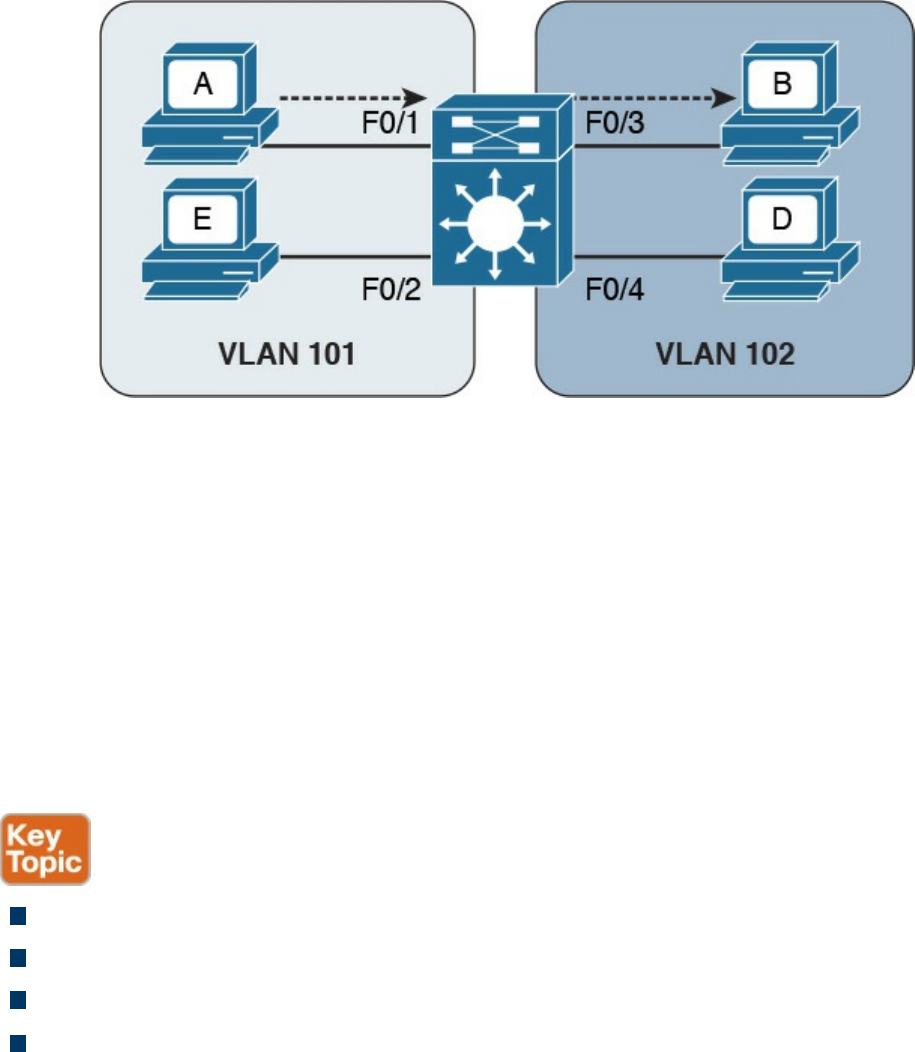

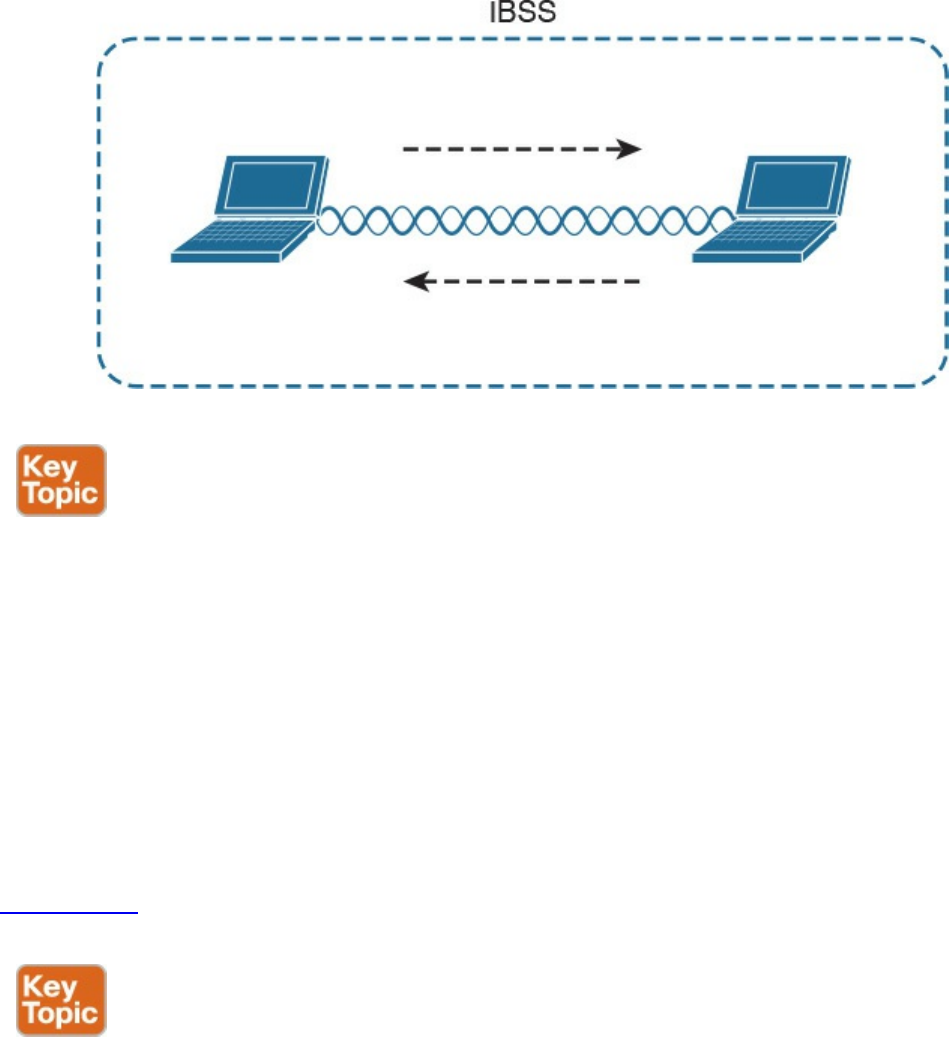

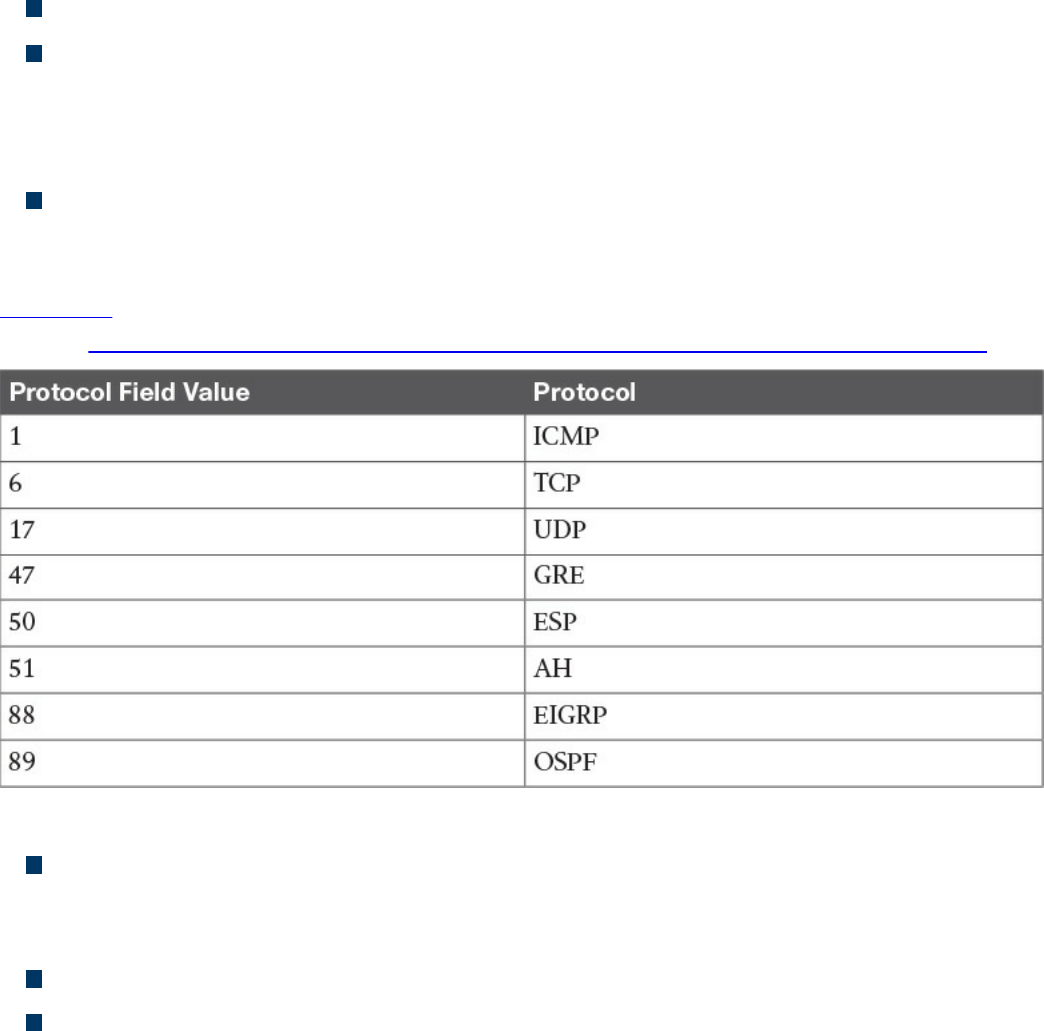

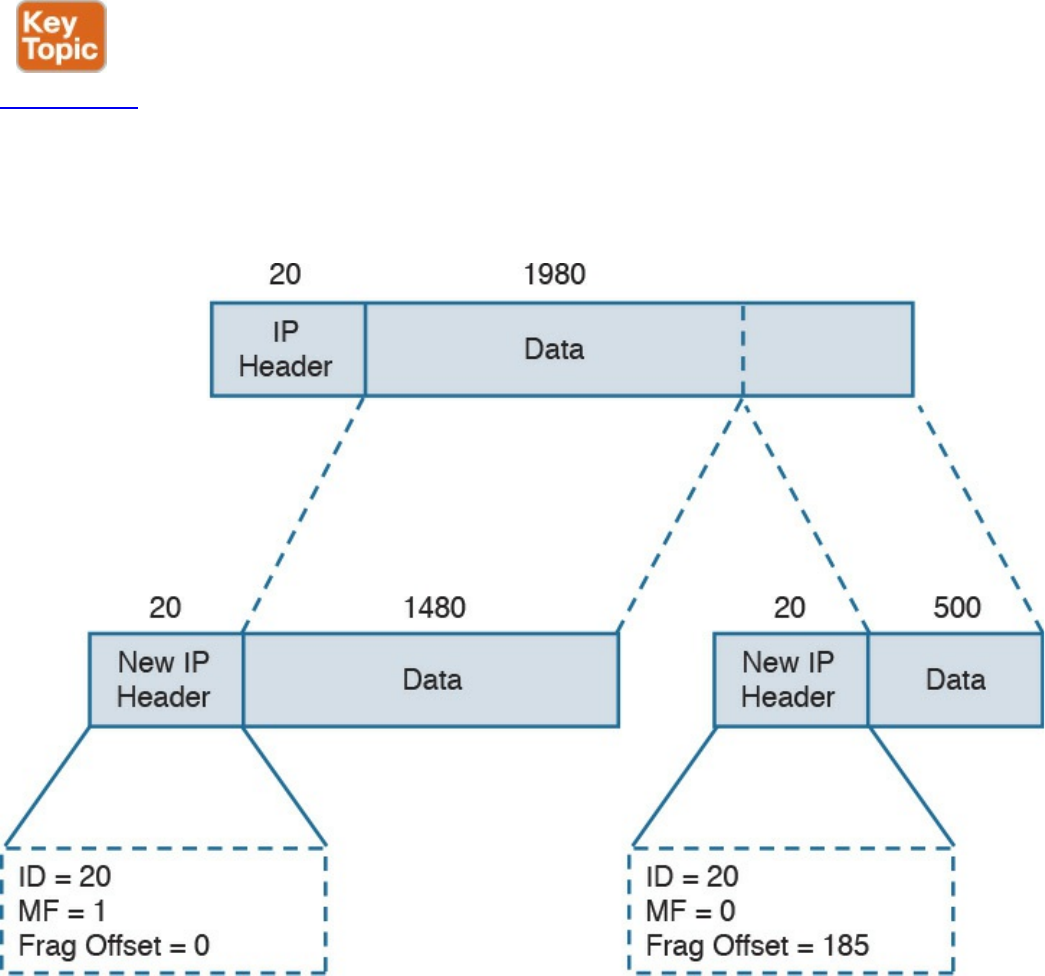

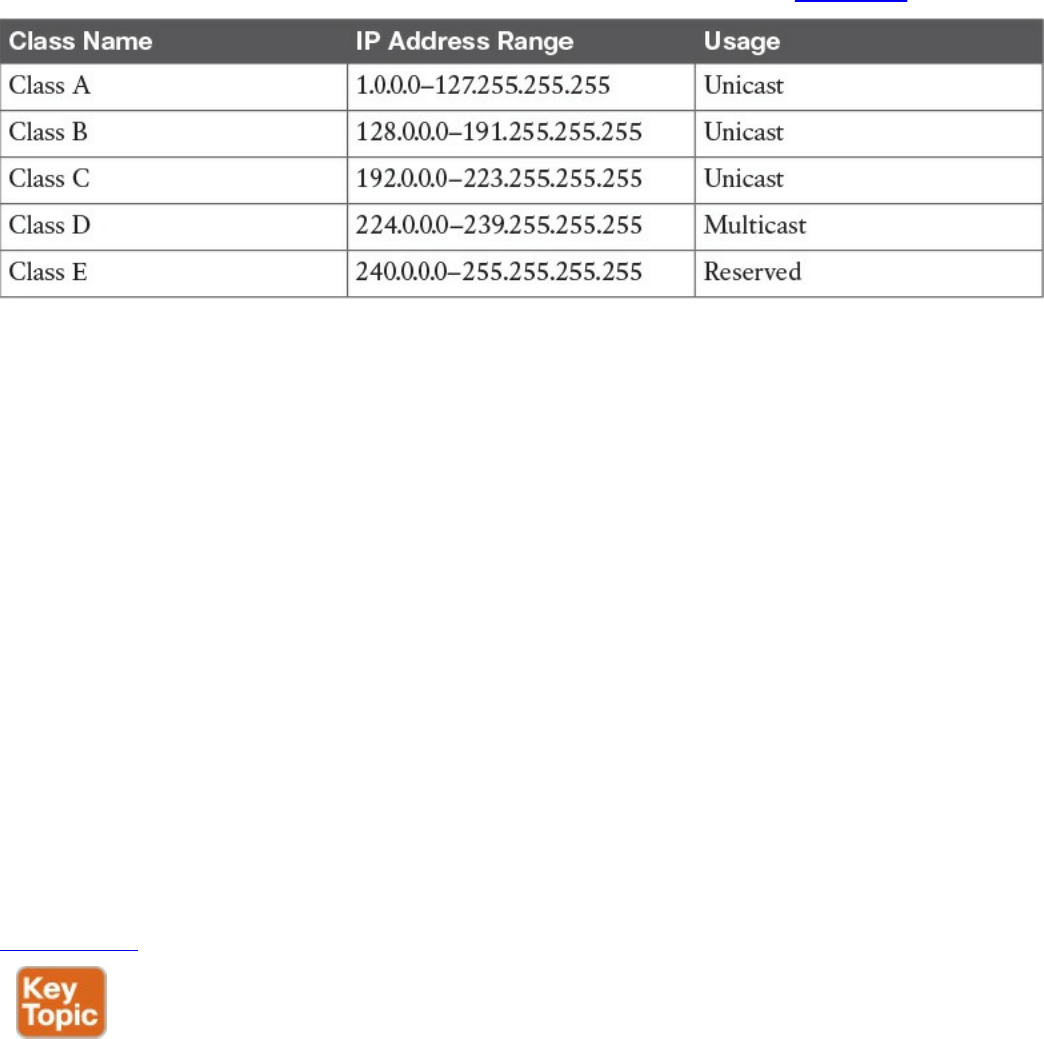

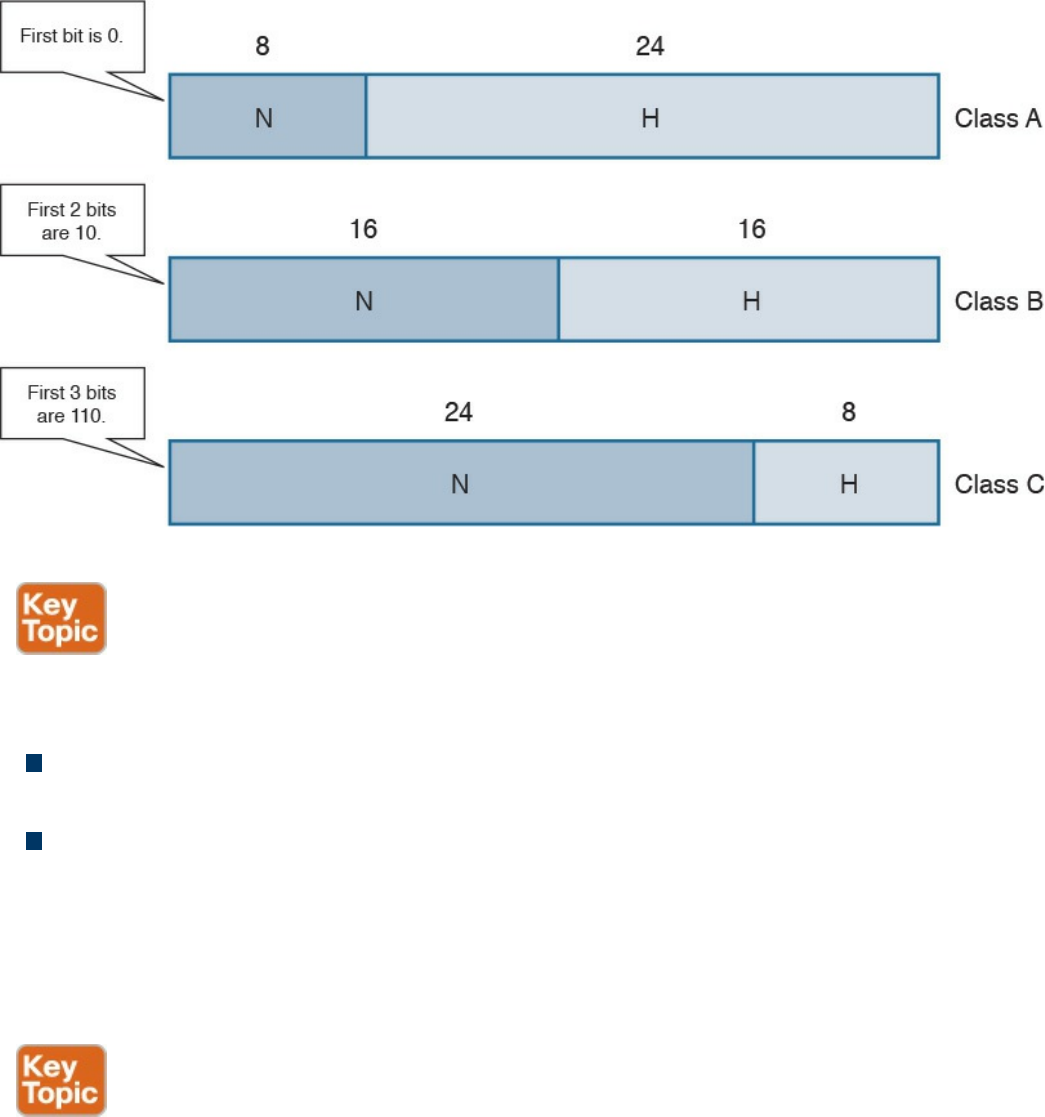

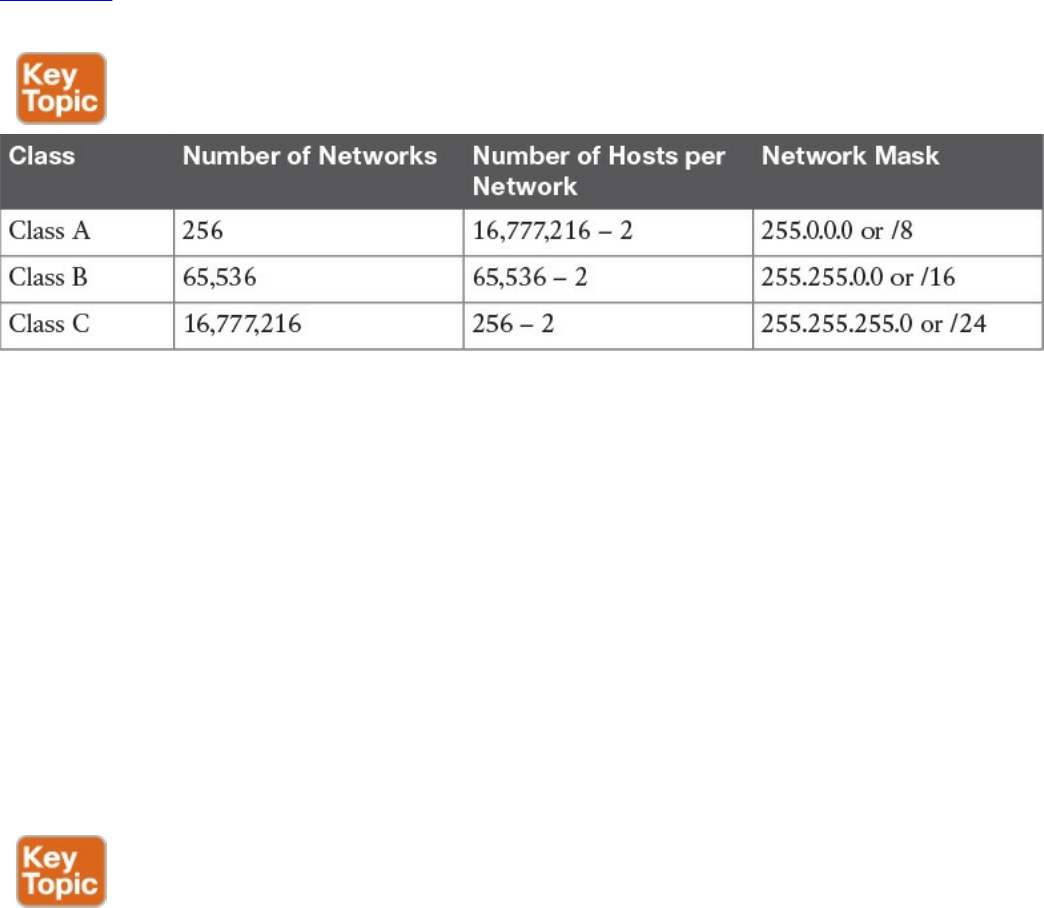

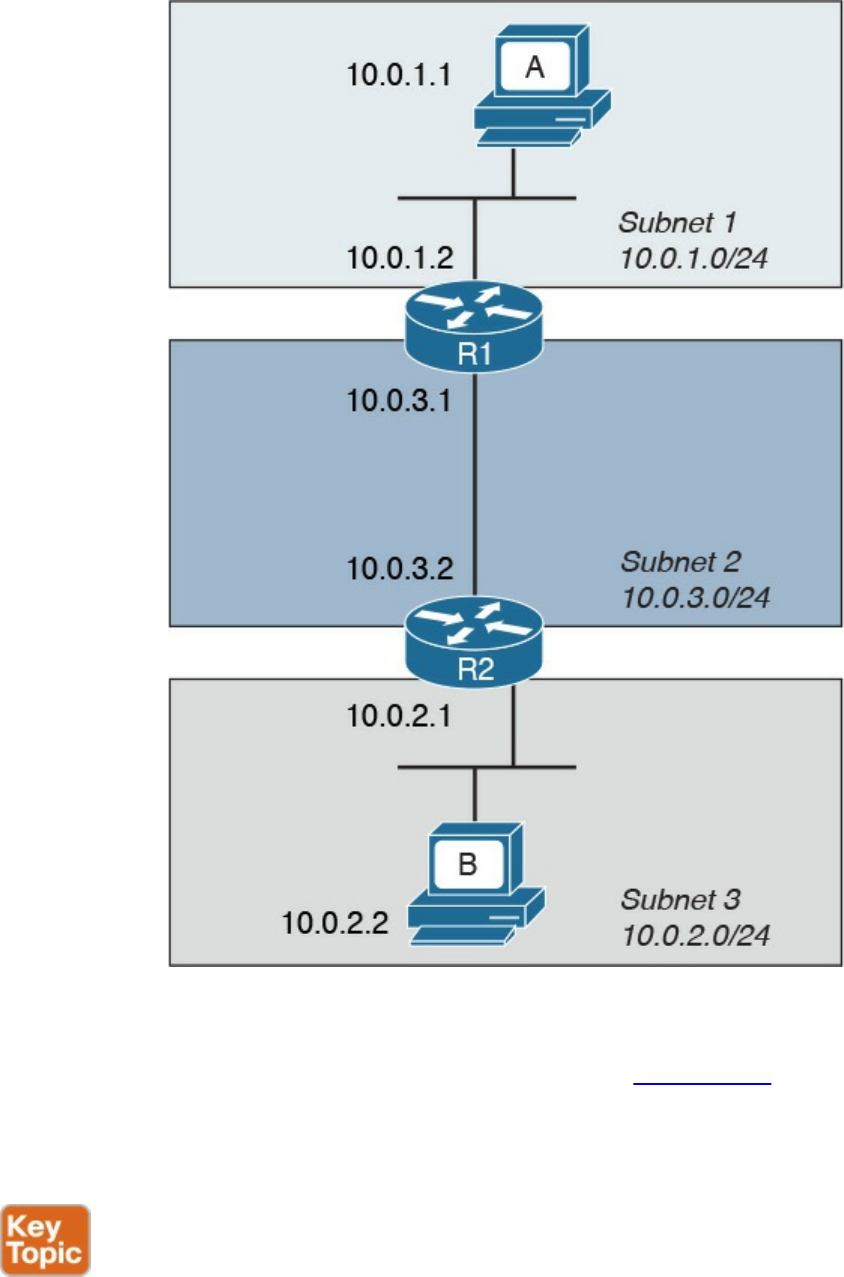

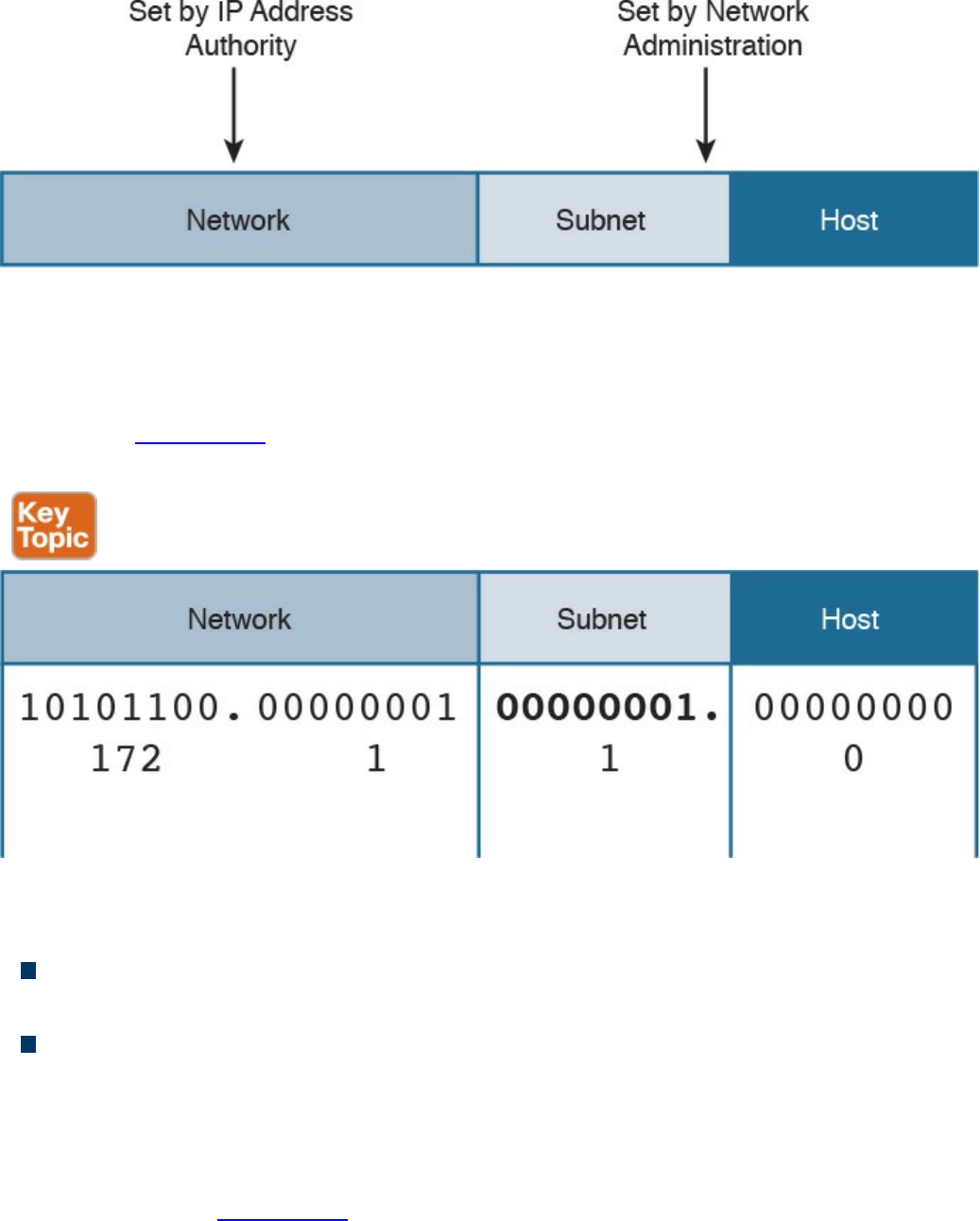

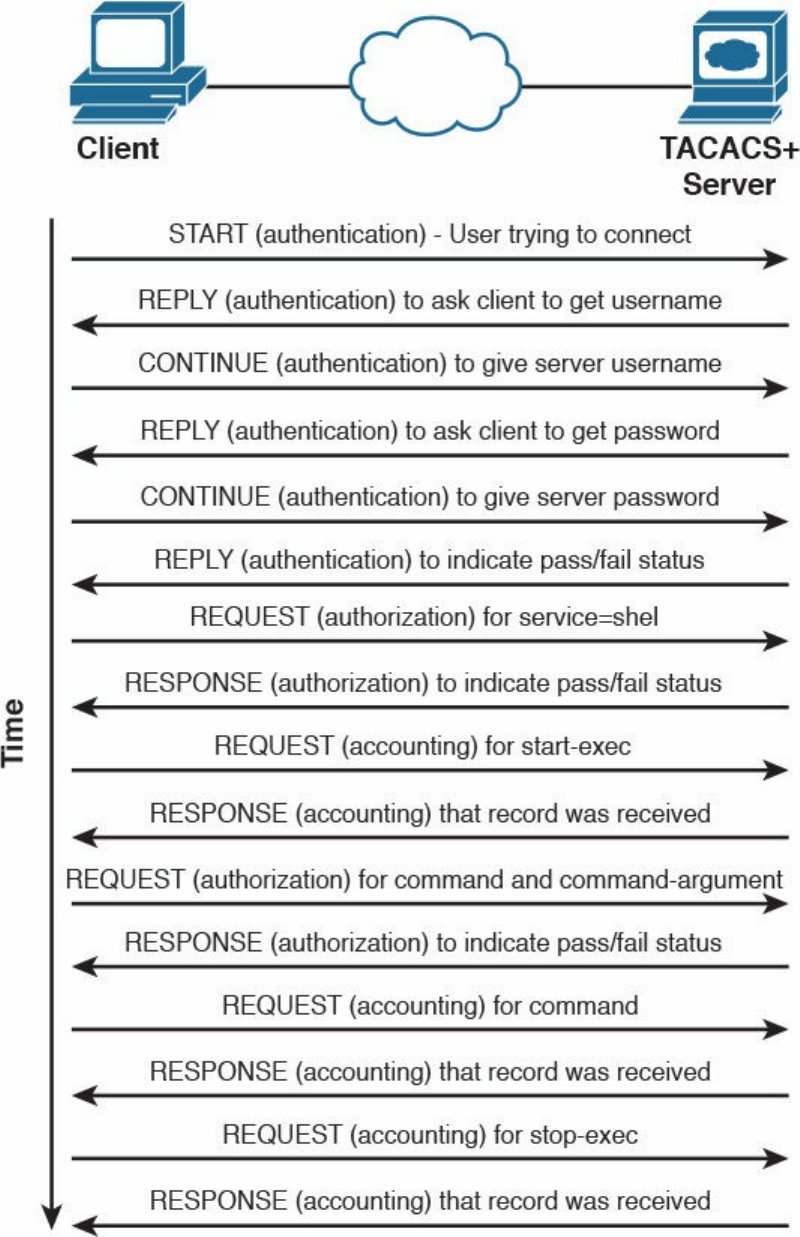

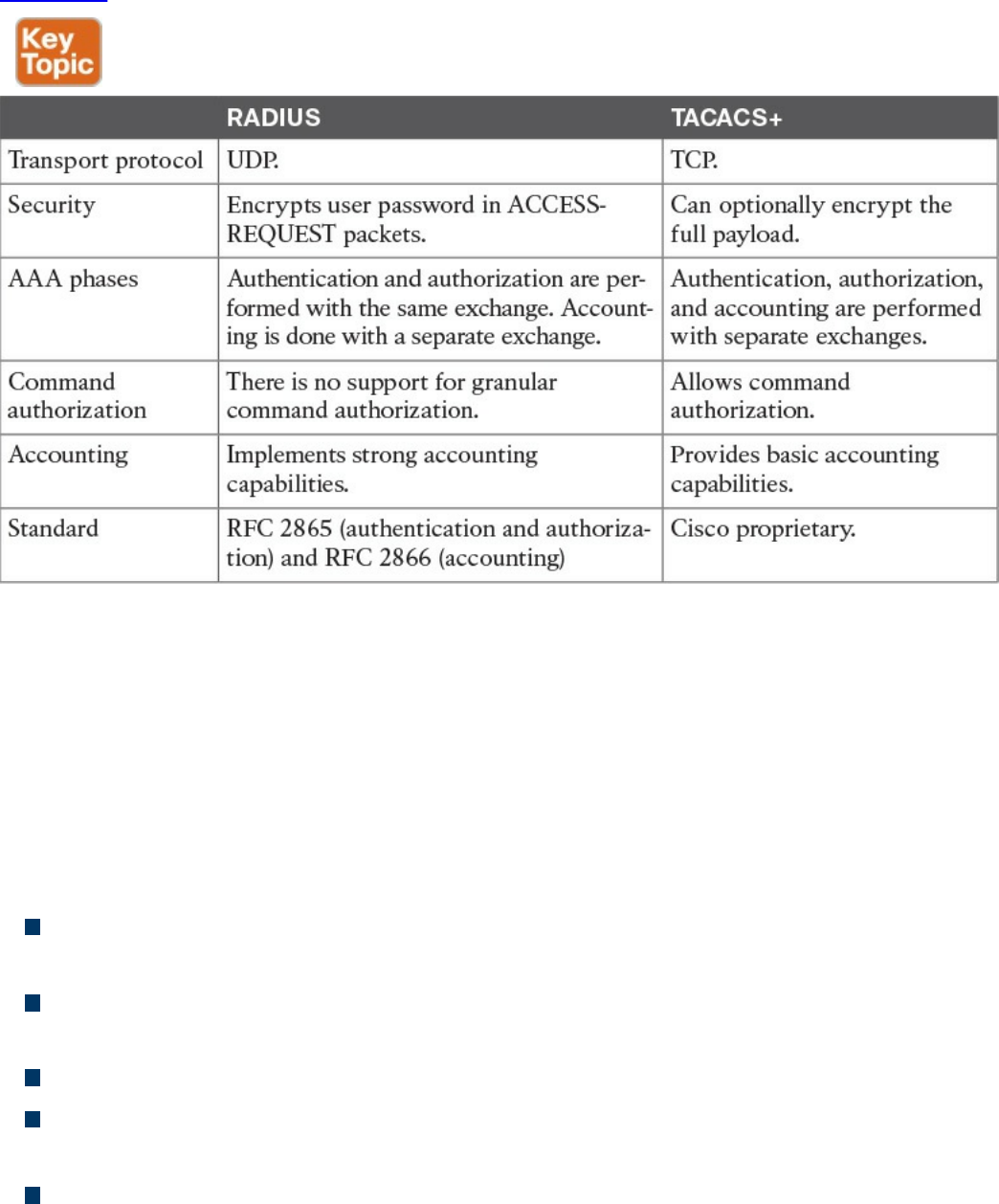

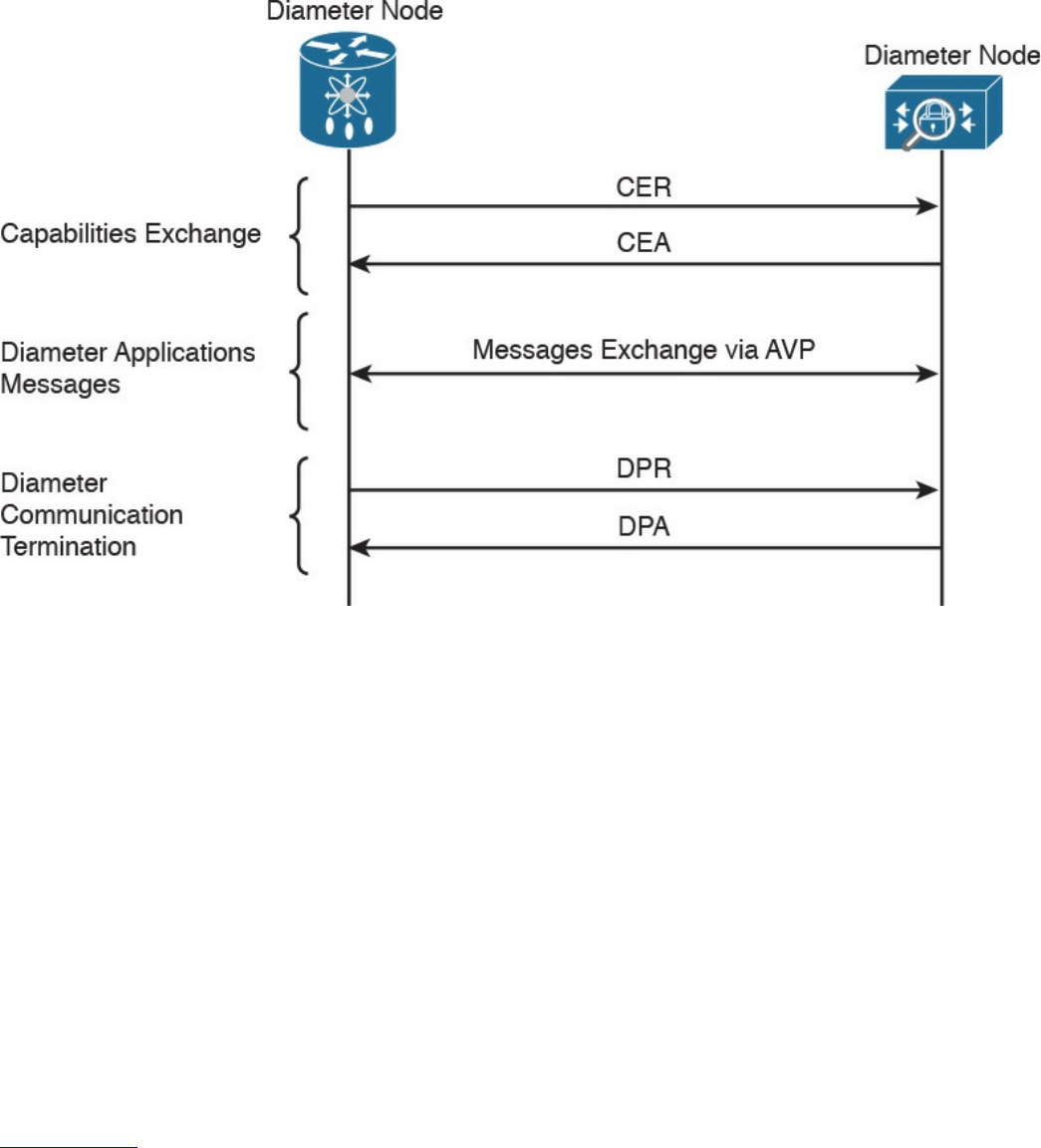

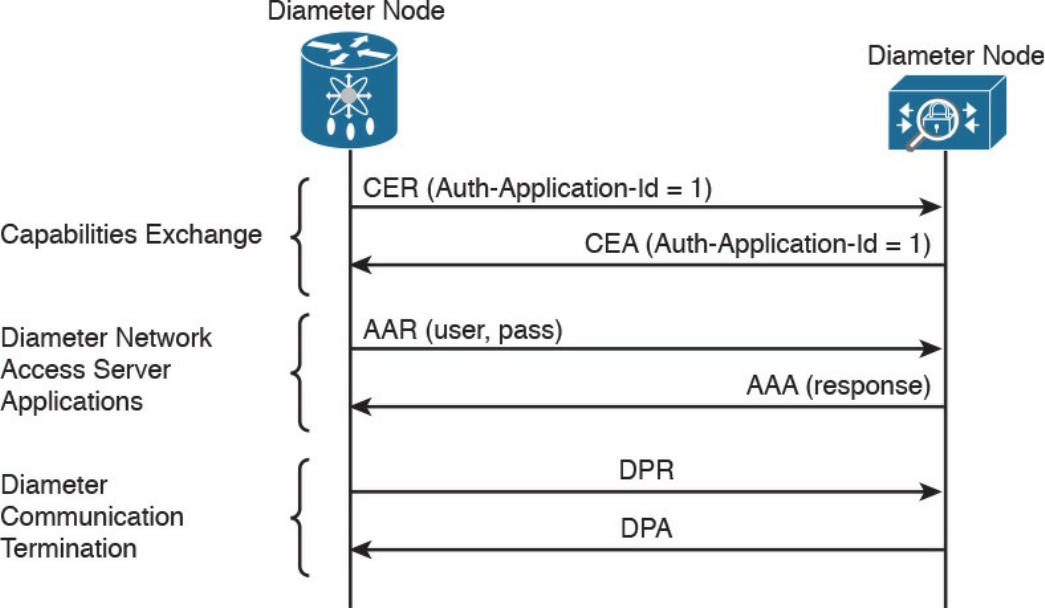

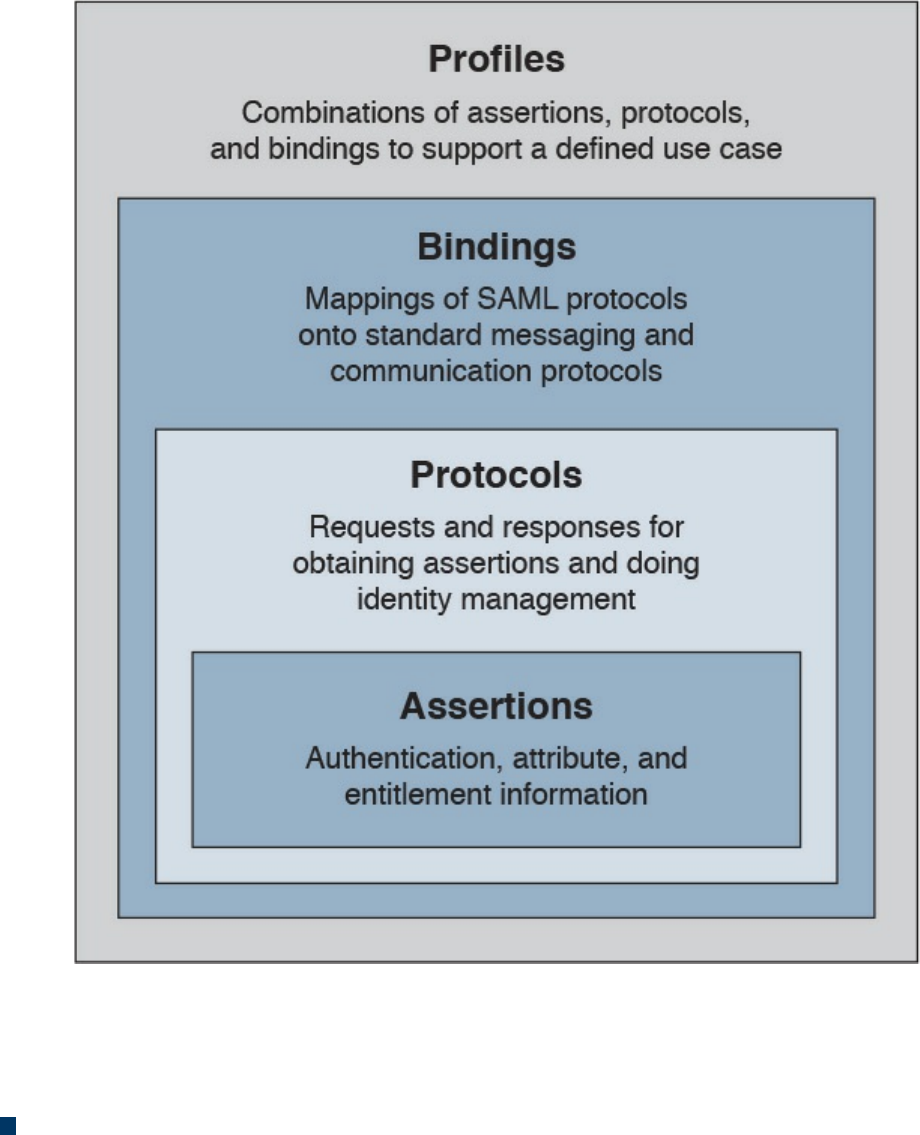

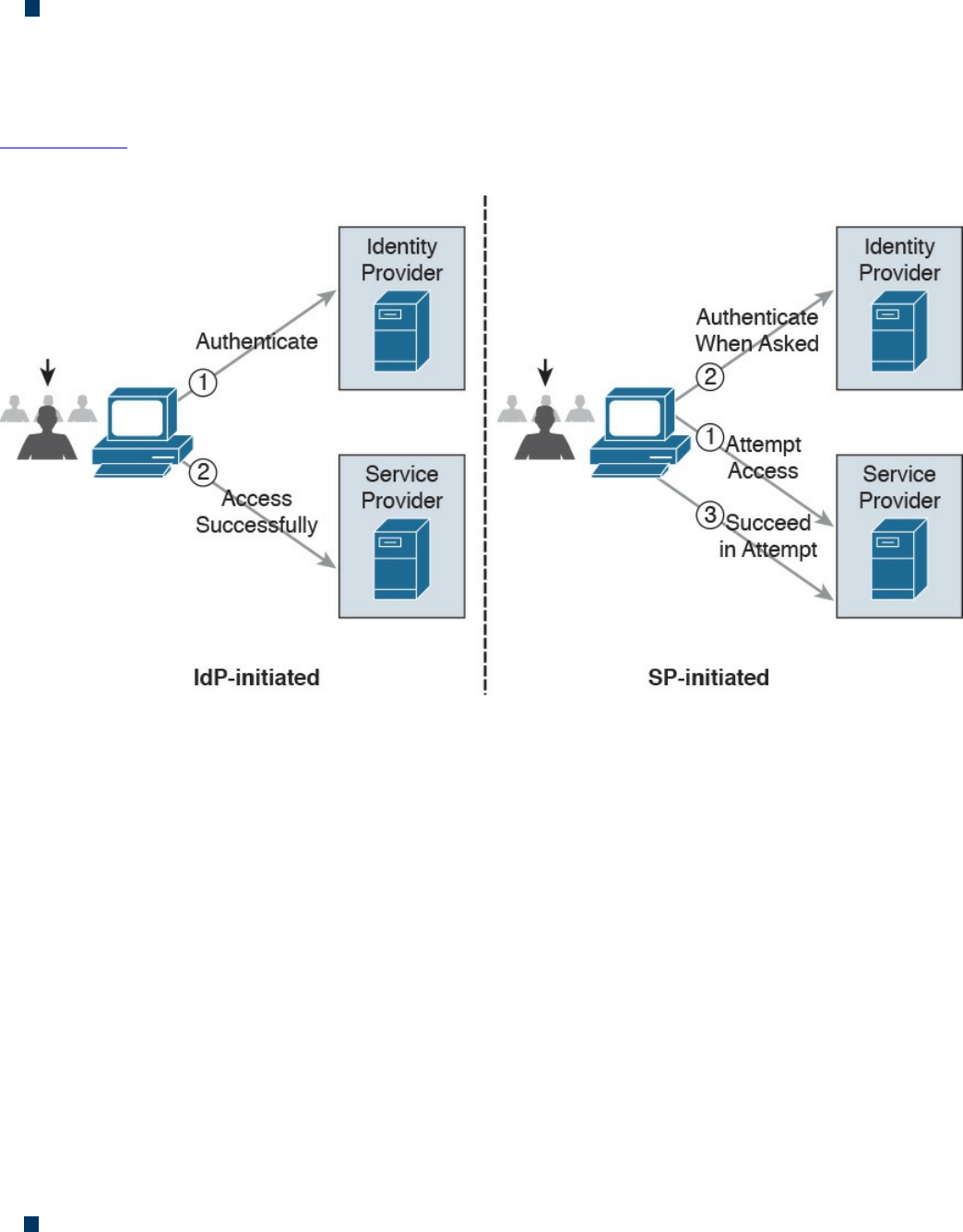

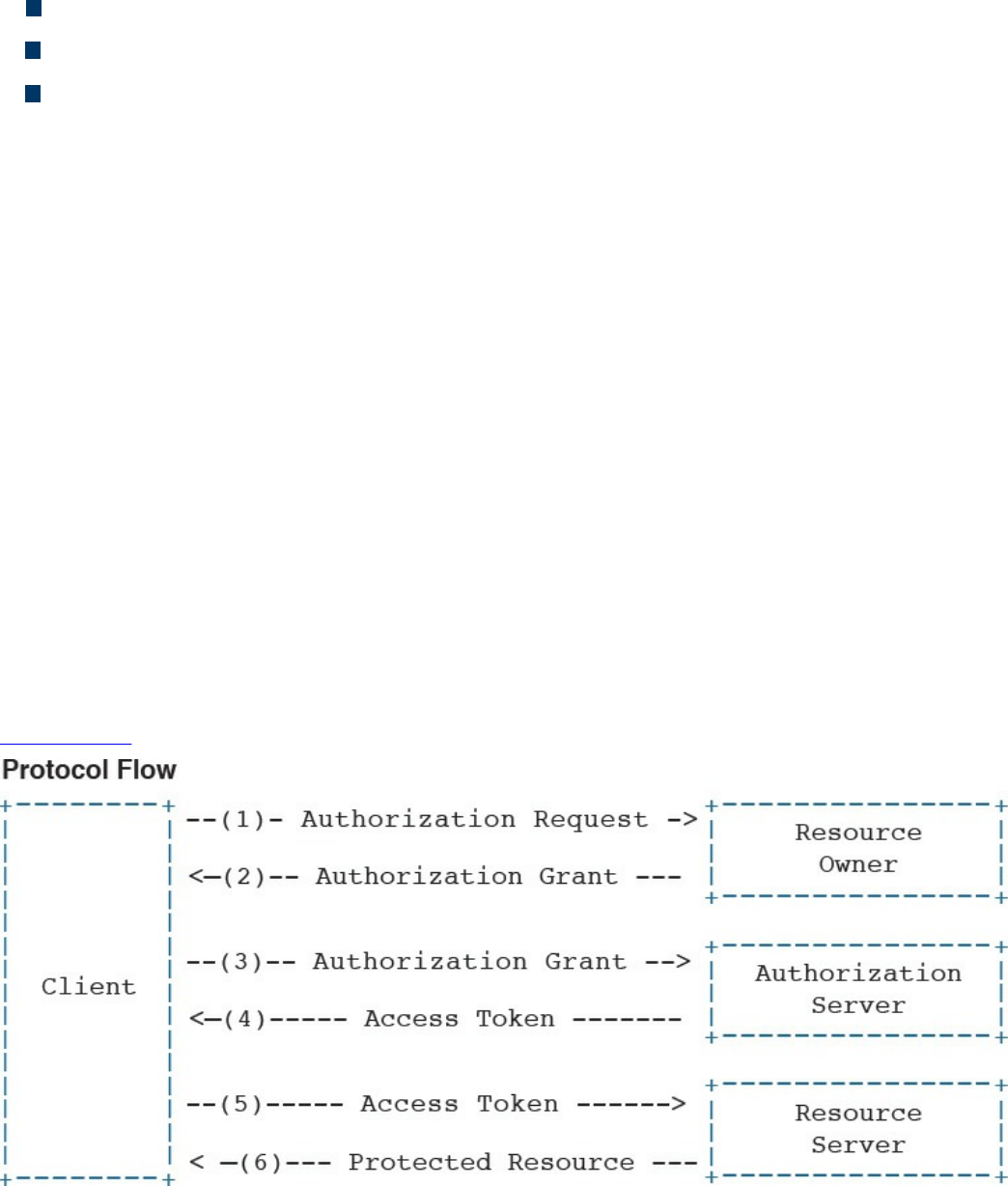

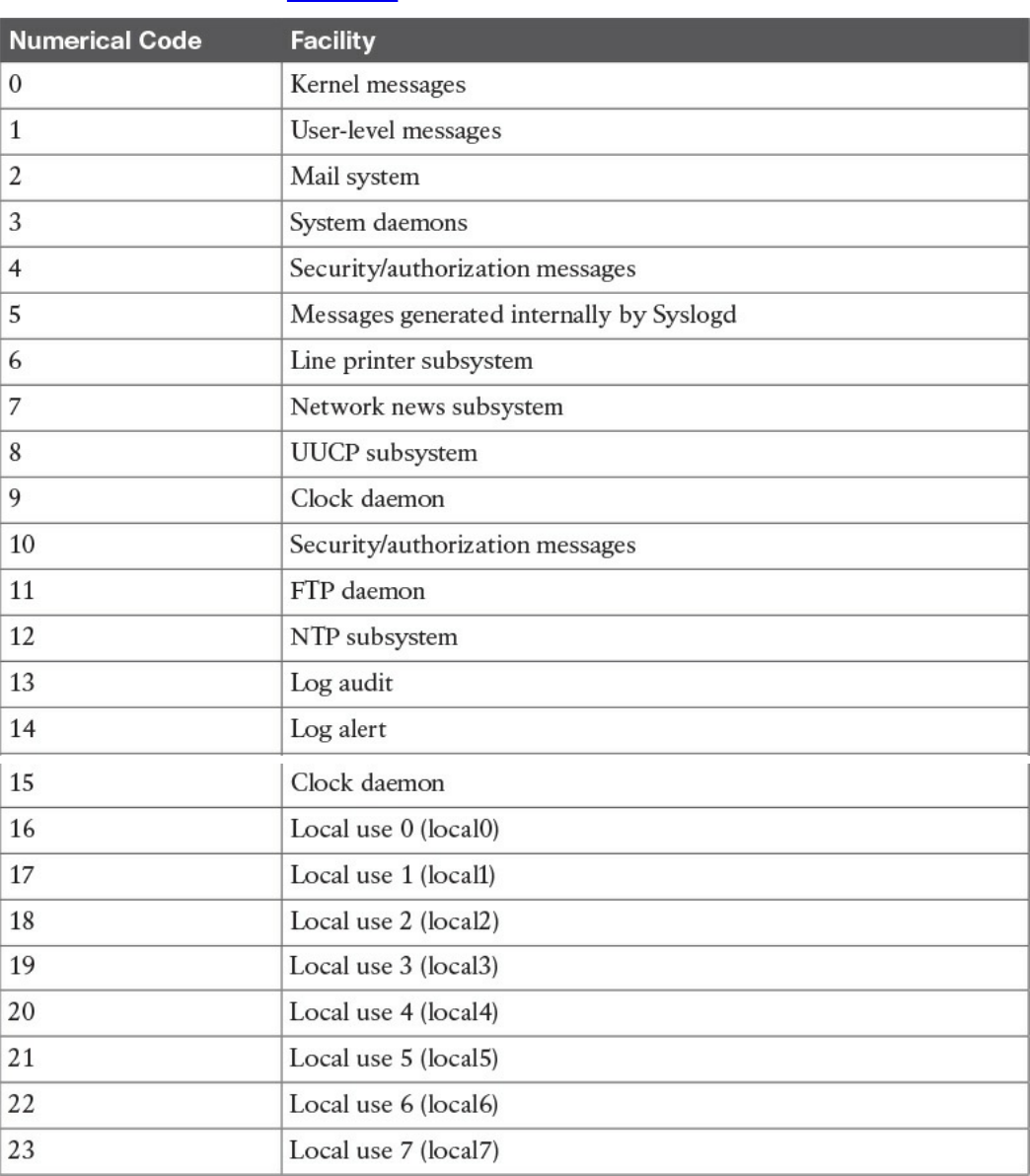

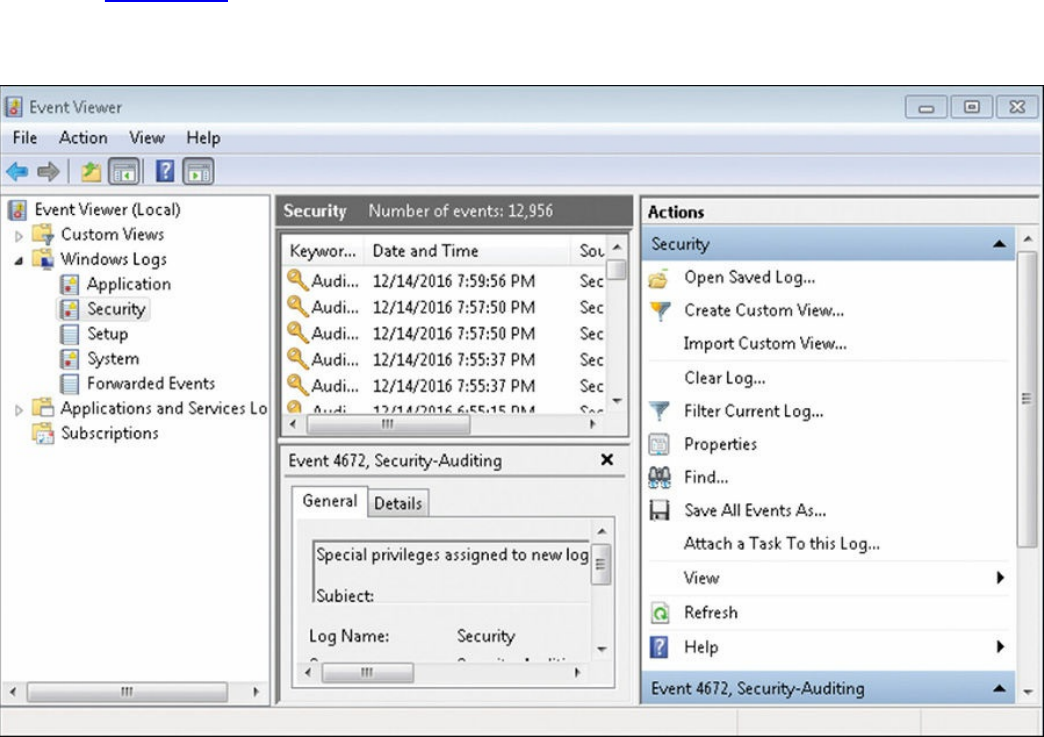

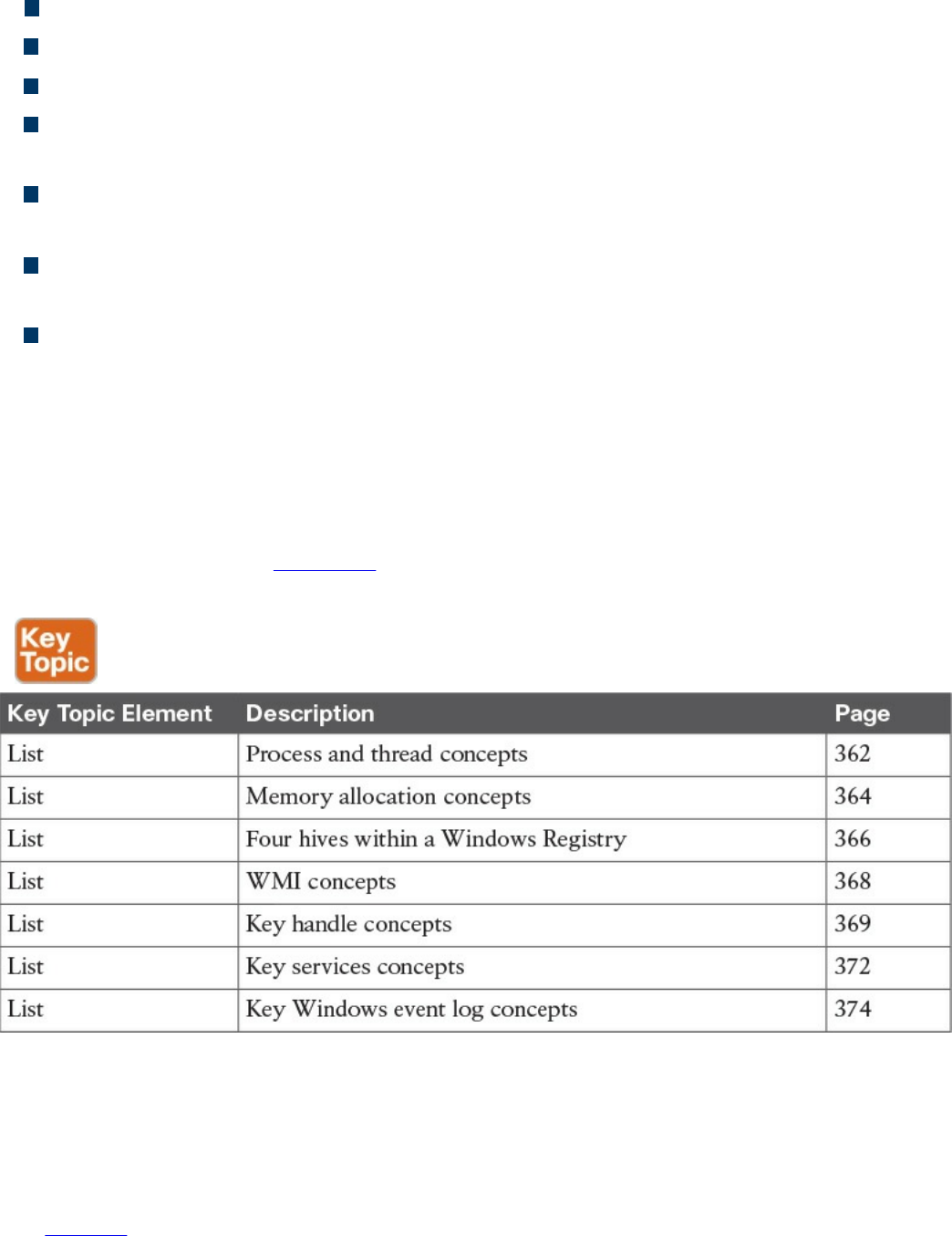

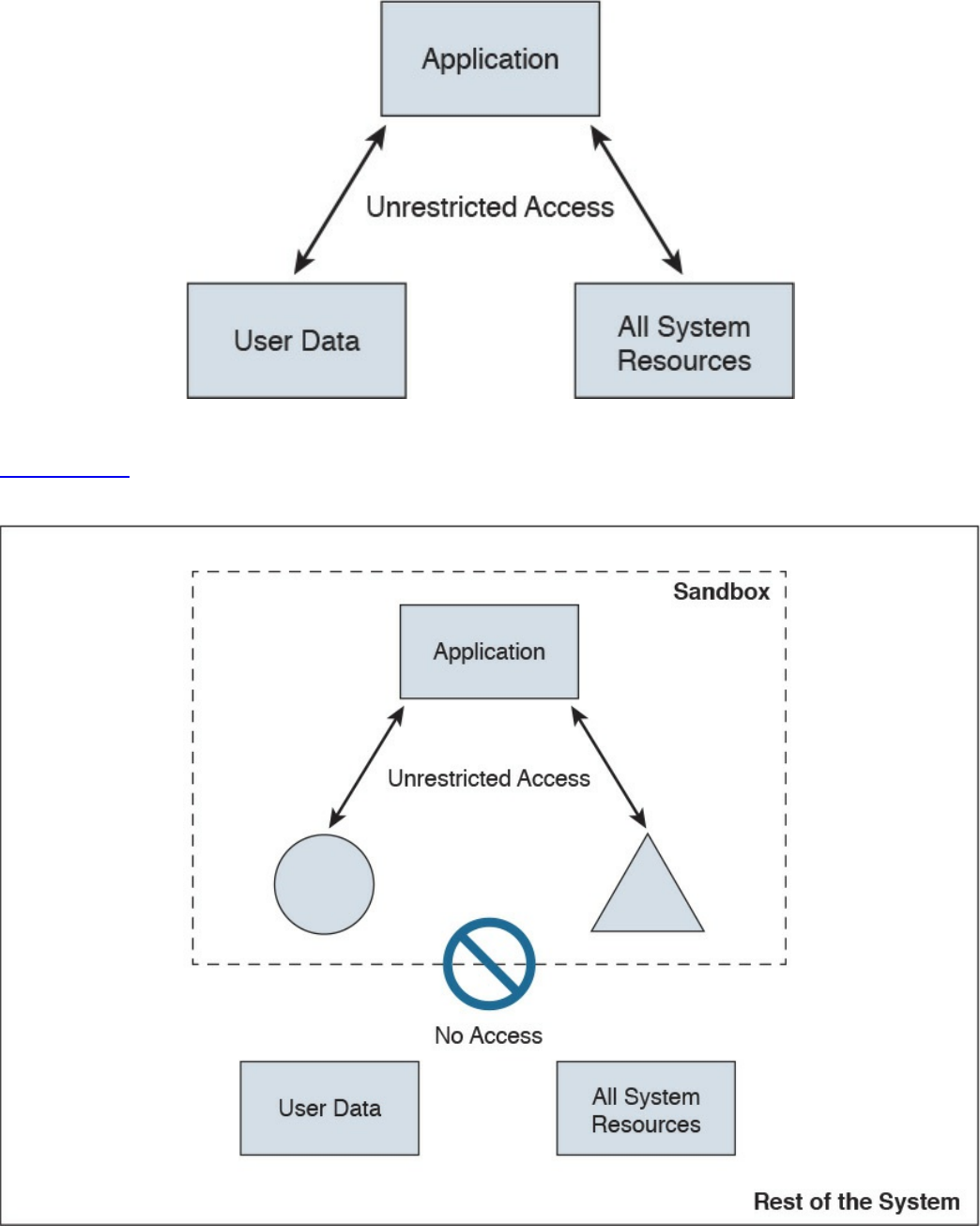

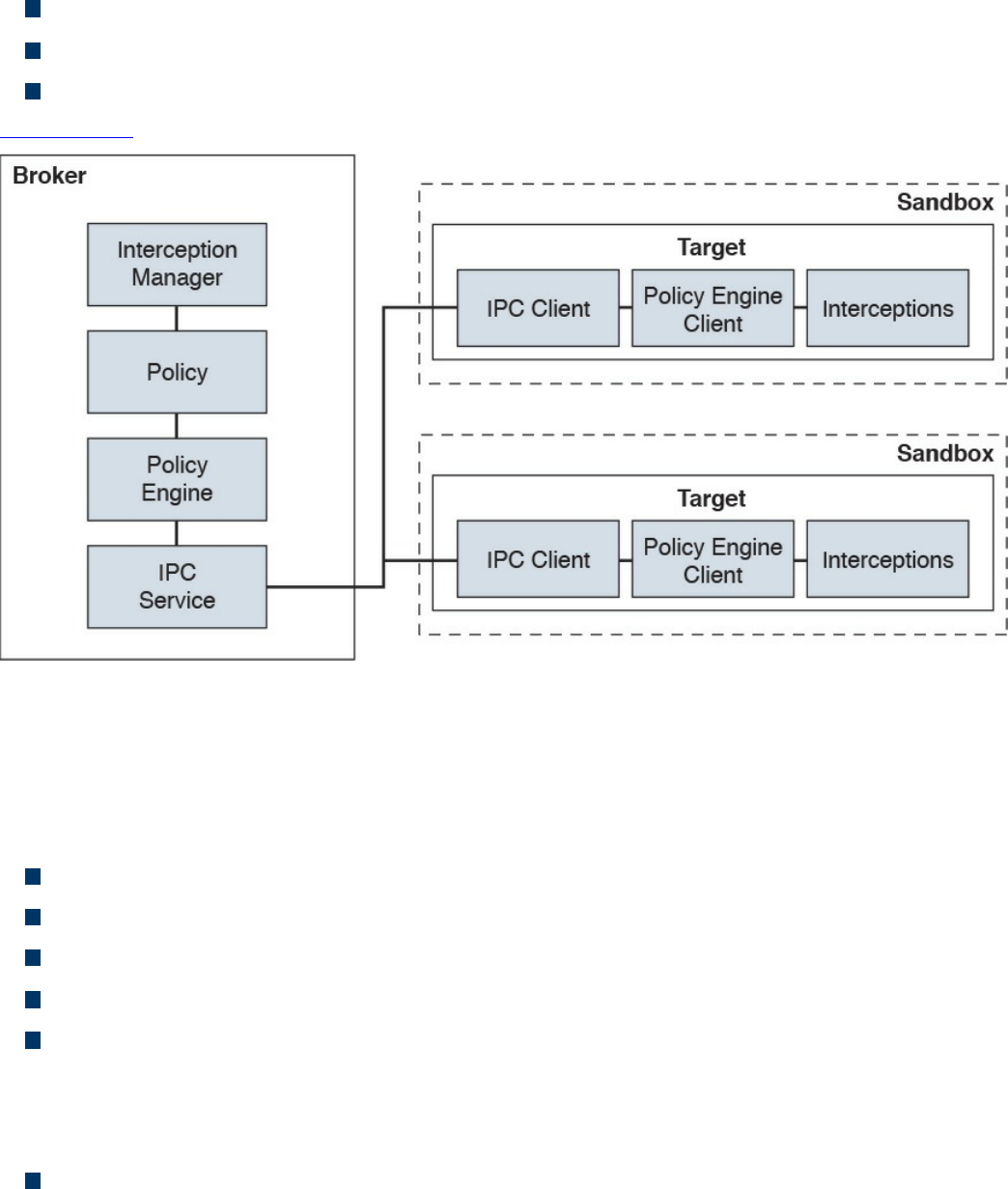

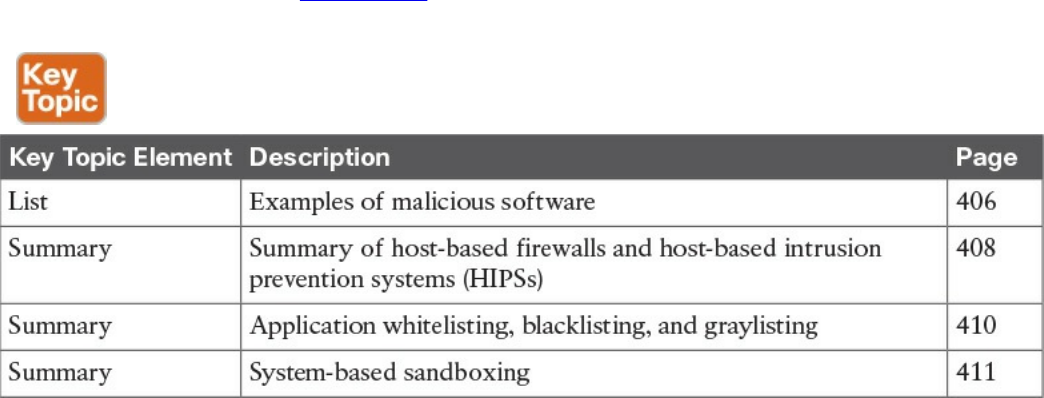

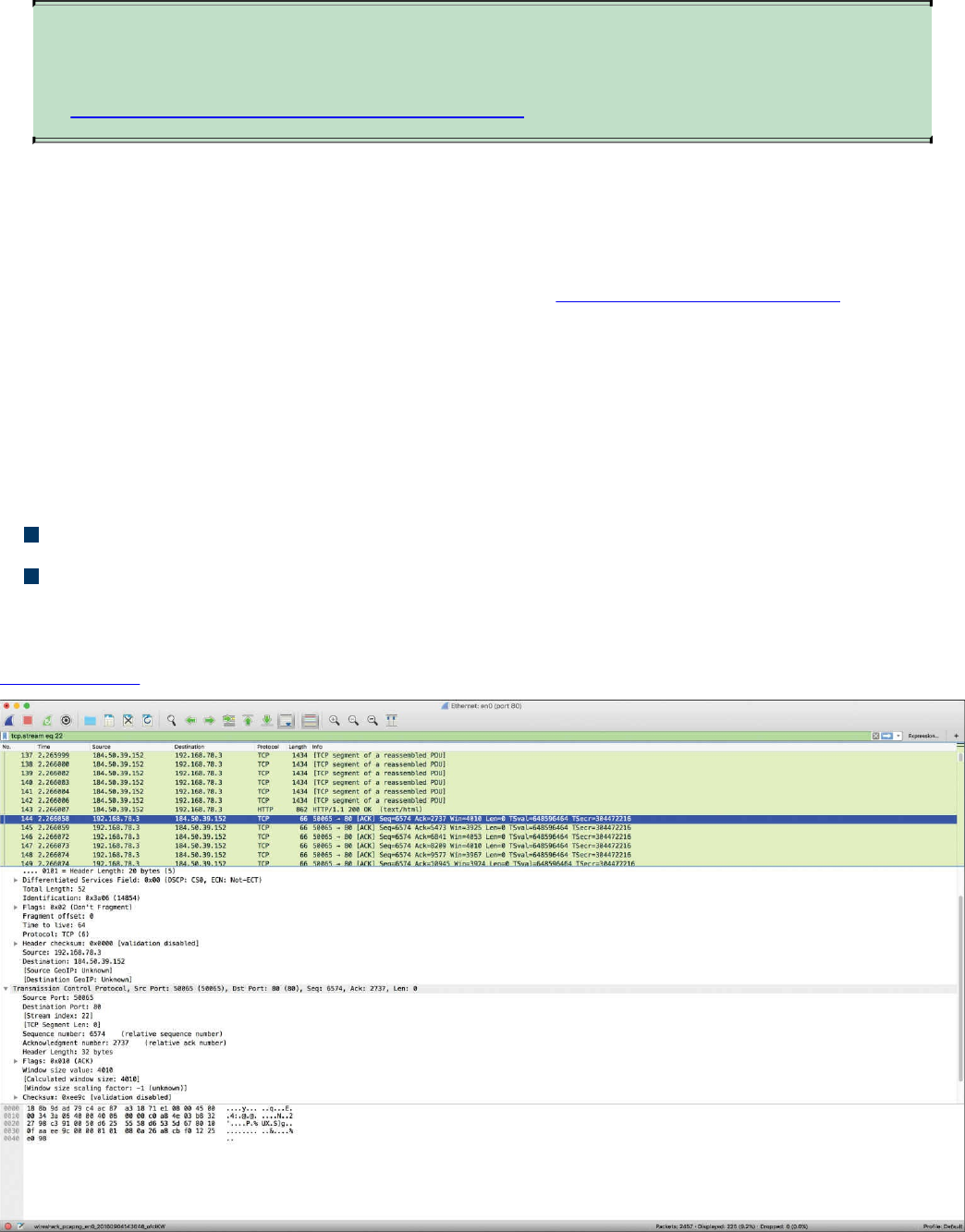

means of connection. It defines L3 addressing, routing, and packet forwarding.