Amazon Kinesis Data Streams Developer Guide 2.Dev Stream



Amazon Kinesis Data Streams

Developer Guide


Copyright © 2019 Amazon Web Services, Inc. and/or its affiliates. All rights reserved.

Amazon's trademarks and trade dress may not be used in connection with any product or service that is not Amazon's, in any manner that is likely to cause confusion among customers, or in any manner that disparages or discredits Amazon. All other trademarks not owned by Amazon are the property of their respective owners, who may or may not be affiliated with, connected to, or sponsored by Amazon.

Amazon Kinesis Data Streams Developer Guide

Table of Contents

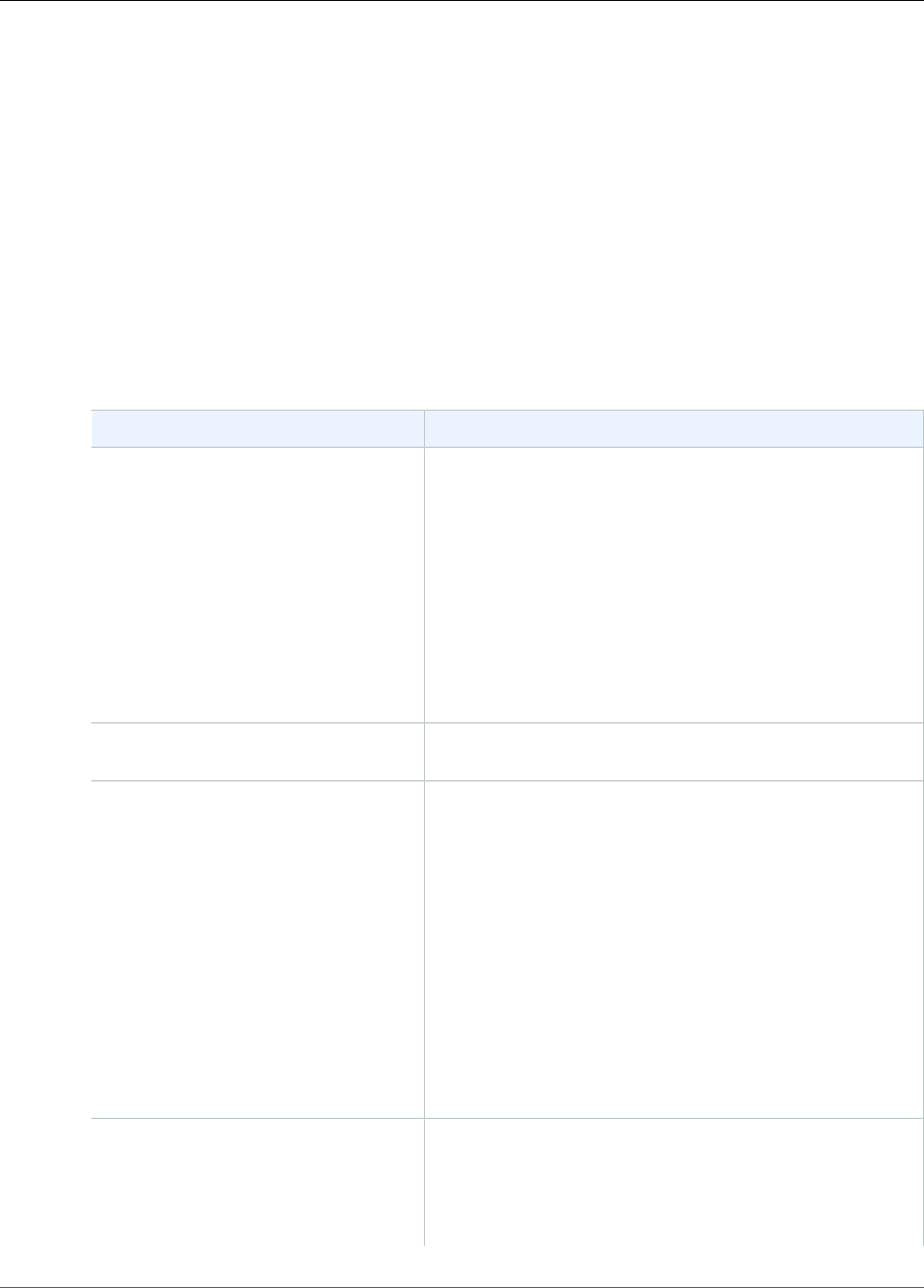

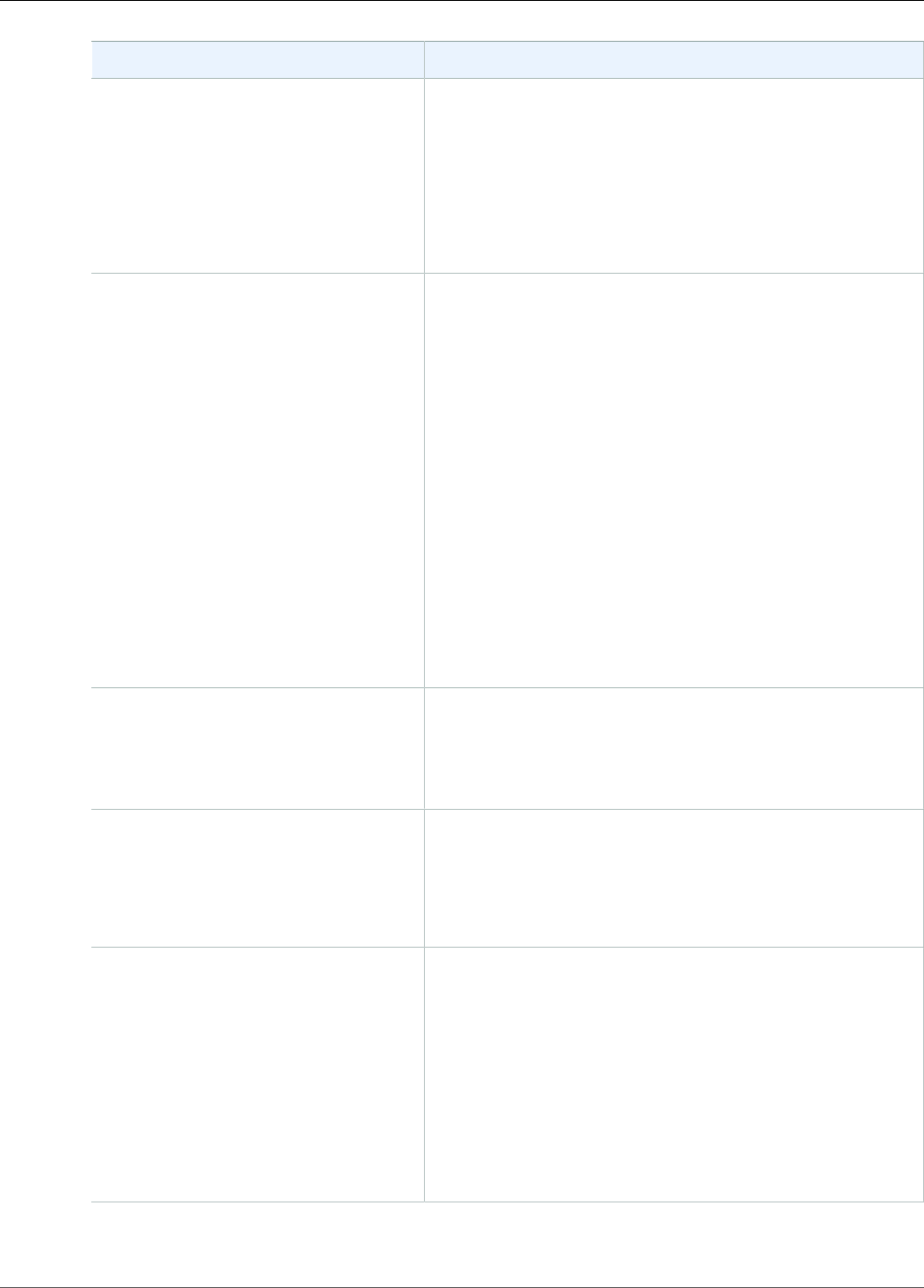

What Is Amazon Kinesis Data Streams? ................................................................................................. 1

What Can I Do with Kinesis Data Streams? .................................................................................... 1

Benefits of Using Kinesis Data Streams ......................................................................................... 2

Related Services ......................................................................................................................... 2

Key Concepts ............................................................................................................................. 2

High-level Architecture ....................................................................................................... 2

Terminology ...................................................................................................................... 3

Data Streams ............................................................................................................................. 5

Determining the Initial Size of a Kinesis Data Stream .............................................................. 5

Creating a Stream .............................................................................................................. 6

Updating a Stream ............................................................................................................. 6

Producers .................................................................................................................................. 7

Consumers ................................................................................................................................ 8

........................................................................................................................................ 8

Limits ....................................................................................................................................... 8

API limits .......................................................................................................................... 8

Increasing Limits ................................................................................................................ 9

Getting Started ................................................................................................................................ 10

Setting Up ............................................................................................................................... 10

Sign Up for AWS .............................................................................................................. 10

Download Libraries and Tools ............................................................................................ 10

Configure Your Development Environment .......................................................................... 11

Tutorial: Visualizing Web Traffic ................................................................................................. 11

Kinesis Data Streams Data Visualization Sample Application .................................................. 11

Prerequisites .................................................................................................................... 12

Step 1: Start the Sample Application .................................................................................. 12

Step 2: View the Components of the Sample Application ...................................................... 13

Step 3: Delete Sample Application ..................................................................................... 16

Step 4: Next Steps ........................................................................................................... 16

Tutorial: Getting Started Using the CLI ....................................................................................... 17

Install and Configure the AWS CLI ...................................................................................... 17

Perform Basic Stream Operations ....................................................................................... 19

Tutorial: Analyzing Real-Time Stock Data .................................................................................... 23

Prerequisites .................................................................................................................... 24

Step 1: Create a Data Stream ............................................................................................ 25

Step 2: Create an IAM Policy and User ................................................................................ 26

Step 3: Download and Build the Implementation Code .......................................................... 29

Step 4: Implement the Producer ........................................................................................ 30

Step 5: Implement the Consumer ....................................................................................... 32

Step 6: (Optional) Extending the Consumer ......................................................................... 35

Step 7: Finishing Up ......................................................................................................... 36

Creating and Managing Streams ......................................................................................................... 38

Creating a Stream .................................................................................................................... 38

Build the Kinesis Data Streams Client ................................................................................. 38

Create the Stream ............................................................................................................ 39

Listing Streams ........................................................................................................................ 40

Listing Shards .......................................................................................................................... 41

Retrieving Shards from a Stream ................................................................................................ 42

Deleting a Stream .................................................................................................................... 42

Resharding a Stream ................................................................................................................. 42

Strategies for Resharding .................................................................................................. 43

Splitting a Shard .............................................................................................................. 44

Merging Two Shards ......................................................................................................... 45

After Resharding .............................................................................................................. 46

iii

Amazon Kinesis Data Streams Developer Guide

Changing the Data Retention Period ........................................................................................... 47

Tagging Your Streams ............................................................................................................... 48

Tag Basics ....................................................................................................................... 48

Tracking Costs Using Tagging ............................................................................................ 49

Tag Restrictions ................................................................................................................ 49

Tagging Streams Using the Kinesis Data Streams Console ...................................................... 49

Tagging Streams Using the AWS CLI ................................................................................... 50

Tagging Streams Using the Kinesis Data Streams API ............................................................ 50

Monitoring Streams .................................................................................................................. 51

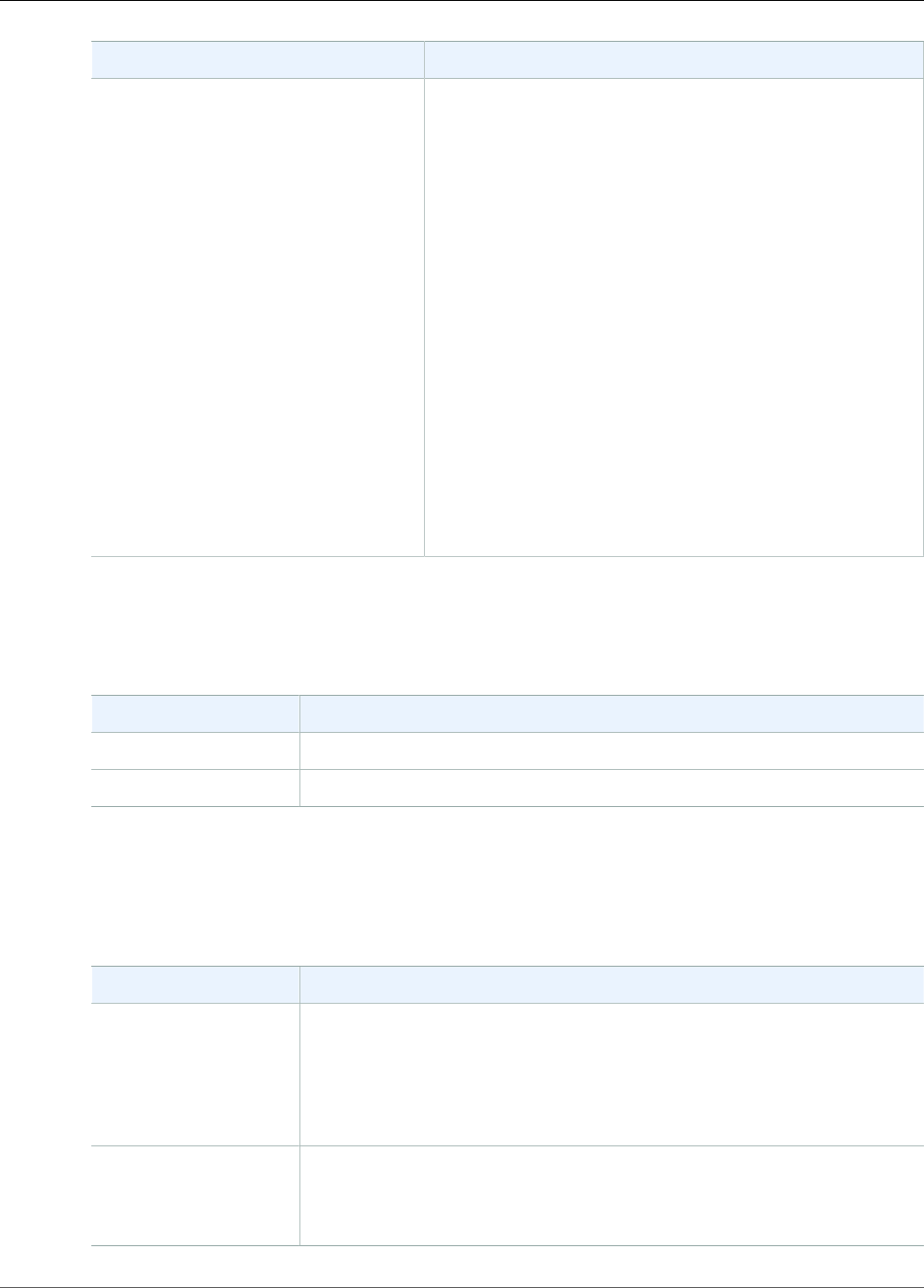

Monitoring the Service with CloudWatch ............................................................................. 51

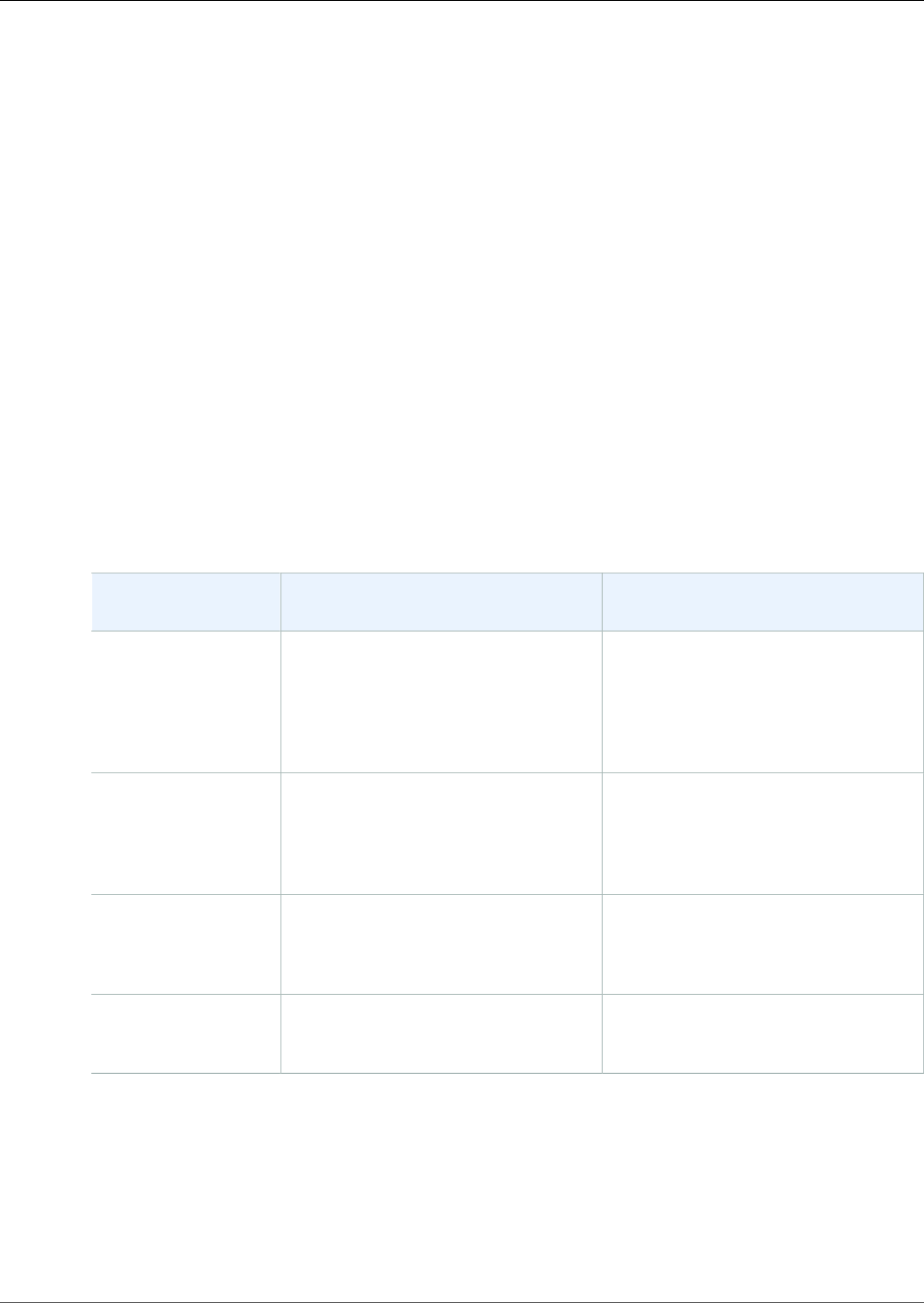

Monitoring the Agent with CloudWatch .............................................................................. 60

Logging Amazon Kinesis Data Streams API Calls with AWS CloudTrail ...................................... 61

Monitoring the KCL with CloudWatch ................................................................................. 65

Monitoring the KPL with CloudWatch ................................................................................. 73

Controlling Access .................................................................................................................... 77

Policy Syntax ................................................................................................................... 78

Actions for Kinesis Data Streams ........................................................................................ 78

Amazon Resource Names (ARNs) for Kinesis Data Streams ..................................................... 79

Example Policies for Kinesis Data Streams ........................................................................... 79

Using Server-Side Encryption ..................................................................................................... 80

What Is Server-Side Encryption for Kinesis Data Streams? ...................................................... 81

Costs, Regions, and Performance Considerations .................................................................. 81

How Do I Get Started with Server-Side Encryption? .............................................................. 82

Creating and Using User-Generated KMS Master Keys ........................................................... 83

Permissions to Use User-Generated KMS Master Keys ........................................................... 84

Verifying and Troubleshooting KMS Key Permissions ............................................................. 85

Using Interface VPC Endpoints ................................................................................................... 85

Interface VPC endpoints for Kinesis Data Streams ................................................................ 85

Using interface VPC endpoints for Kinesis Data Streams ........................................................ 85

Availability ....................................................................................................................... 86

Managing Streams Using the Console ......................................................................................... 86

Writing Data to Streams ................................................................................................................... 88

Using the KPL .......................................................................................................................... 88

Role of the KPL ............................................................................................................... 89

Advantages of Using the KPL ............................................................................................. 89

When Not to Use the KPL ................................................................................................. 90

Installing the KPL ............................................................................................................. 90

Transitioning to Amazon Trust Services (ATS) Certificates for the Kinesis Producer Library ........... 90

KPL Supported Platforms .................................................................................................. 90

KPL Key Concepts ............................................................................................................. 91

Integrating the KPL with Producer Code .............................................................................. 92

Writing to your Kinesis data stream .................................................................................... 94

Configuring the KPL ......................................................................................................... 95

Consumer De-aggregation ................................................................................................. 96

Using the KPL with Kinesis Data Firehose ............................................................................ 98

Using the API .......................................................................................................................... 98

Adding Data to a Stream .................................................................................................. 98

Using the Agent ..................................................................................................................... 102

Prerequisites .................................................................................................................. 103

Download and Install the Agent ....................................................................................... 103

Configure and Start the Agent ......................................................................................... 104

Agent Configuration Settings ........................................................................................... 104

Monitor Multiple File Directories and Write to Multiple Streams ............................................ 107

Use the Agent to Pre-process Data ................................................................................... 107

Agent CLI Commands ...................................................................................................... 110

Troubleshooting ..................................................................................................................... 111

Producer Application is Writing at a Slower Rate Than Expected ........................................... 111

iv

Amazon Kinesis Data Streams Developer Guide

Unauthorized KMS master key permission error .................................................................. 112

Advanced Topics ..................................................................................................................... 112

Retries and Rate Limiting ................................................................................................ 112

Considerations When Using KPL Aggregation ..................................................................... 113

Reading Data from Streams ............................................................................................................. 115

Using Consumers .................................................................................................................... 116

Using the Kinesis Client Library 1.x ................................................................................... 116

Using the Kinesis Client Library 2.0 ................................................................................... 130

Using the API ................................................................................................................. 134

Using Consumers with Enhanced Fan-Out .................................................................................. 138

Using the Kinesis Client Library 2.0 ................................................................................... 139

Using the API ................................................................................................................. 143

Using the AWS Management Console ................................................................................ 144

Migrating from Kinesis Client Library 1.x to 2.x .......................................................................... 145

Migrating the Record Processor ........................................................................................ 145

Migrating the Record Processor Factory ............................................................................. 149

Migrating the Worker ...................................................................................................... 149

Configuring the Amazon Kinesis Client .............................................................................. 150

Idle Time Removal .......................................................................................................... 153

Client Configuration Removals ......................................................................................... 153

Troubleshooting ..................................................................................................................... 154

Some Kinesis Data Streams Records are Skipped When Using the Kinesis Client Library ............ 154

Records Belonging to the Same Shard are Processed by Different Record Processors at the

Same Time .................................................................................................................... 154

Consumer Application is Reading at a Slower Rate Than Expected ......................................... 155

GetRecords Returns Empty Records Array Even When There is Data in the Stream .................... 155

Shard Iterator Expires Unexpectedly .................................................................................. 156

Consumer Record Processing Falling Behind ....................................................................... 156

Unauthorized KMS master key permission error .................................................................. 157

Advanced Topics ..................................................................................................................... 157

Tracking State ................................................................................................................ 157

Low-Latency Processing ................................................................................................... 158

Using AWS Lambda with the Kinesis Producer Library ......................................................... 159

Resharding, Scaling, and Parallel Processing ....................................................................... 159

Handling Duplicate Records ............................................................................................. 160

Recovering from Failures ................................................................................................. 161

Handling Startup, Shutdown, and Throttling ...................................................................... 162

Document History .......................................................................................................................... 164

AWS Glossary ................................................................................................................................. 166

What Is Amazon Kinesis Data Streams?

You can use Amazon Kinesis Data Streams to collect and process large streams of data records in real time. You can create data-processing applications, known as Kinesis Data Streams applications. A typical Kinesis Data Streams application reads data from a data stream as data records. These applications can use the Kinesis Client Library, and they can run on Amazon EC2 instances. You can send the processed records to dashboards, use them to generate alerts, dynamically change pricing and advertising strategies, or send data to a variety of other AWS services. For information about Kinesis Data Streams features and pricing, see Amazon Kinesis Data Streams.

Kinesis Data Streams is part of the Kinesis streaming data platform, along with Kinesis Data Firehose, Kinesis Video Streams, and Kinesis Data Analytics.

For more information about AWS big data solutions, see Big Data on AWS. For more information about AWS streaming data solutions, see What is Streaming Data?

Topics

• What Can I Do with Kinesis Data Streams?
• Benefits of Using Kinesis Data Streams
• Related Services
• Kinesis Data Streams Key Concepts
• Creating and Updating Data Streams
• Kinesis Data Streams Producers
• Kinesis Data Streams Consumers
• Kinesis Data Streams Limits

What Can I Do with Kinesis Data Streams?

You can use Kinesis Data Streams for rapid and continuous data intake and aggregation. The type of data used can include IT infrastructure log data, application logs, social media, market data feeds, and web clickstream data. Because the response time for the data intake and processing is in real time, the processing is typically lightweight.

The following are typical scenarios for using Kinesis Data Streams:

Accelerated log and data feed intake and processing

You can have producers push data directly into a stream. For example, you can push system and application logs into a stream, and they are available for processing within seconds. This prevents the log data from being lost if the front end or application server fails. Kinesis Data Streams provides accelerated data feed intake because you don't batch the data on the servers before you submit it for intake.

Real-time metrics and reporting

You can use data collected into Kinesis Data Streams for simple data analysis and reporting in real time. For example, your data-processing application can work on metrics and reporting for system and application logs as the data is streaming in, rather than wait to receive batches of data.

Real-time data analytics

This combines the power of parallel processing with the value of real-time data. For example, you can process website clickstreams in real time, and then analyze site usability engagement using multiple different Kinesis Data Streams applications running in parallel.

Complex stream processing

You can create Directed Acyclic Graphs (DAGs) of Kinesis Data Streams applications and data streams. This typically involves putting data from multiple Kinesis Data Streams applications into another stream for downstream processing by a different Kinesis Data Streams application.

Benefits of Using Kinesis Data Streams

Although you can use Kinesis Data Streams to solve a variety of streaming data problems, a common use is the real-time aggregation of data followed by loading the aggregate data into a data warehouse or map-reduce cluster.

Data is put into Kinesis data streams, which ensures durability and elasticity. The delay between the time a record is put into the stream and the time it can be retrieved (put-to-get delay) is typically less than 1 second. In other words, a Kinesis Data Streams application can start consuming the data from the stream almost immediately after the data is added. The managed service aspect of Kinesis Data Streams relieves you of the operational burden of creating and running a data intake pipeline. You can create streaming map-reduce–type applications. The elasticity of Kinesis Data Streams enables you to scale the stream up or down, so that you never lose data records before they expire.

Multiple Kinesis Data Streams applications can consume data from a stream, so that multiple actions, like archiving and processing, can take place concurrently and independently. For example, two applications can read data from the same stream. The first application calculates running aggregates and updates an Amazon DynamoDB table, and the second application compresses and archives data to a data store like Amazon Simple Storage Service (Amazon S3). The DynamoDB table with running aggregates is then read by a dashboard for up-to-the-minute reports.

The Kinesis Client Library enables fault-tolerant consumption of data from streams and provides scaling support for Kinesis Data Streams applications.

Related Services

For information about using Amazon EMR clusters to read and process Kinesis data streams directly, see Kinesis Connector.

Kinesis Data Streams Key Concepts

As you get started with Amazon Kinesis Data Streams, you can benefit from understanding its architecture and terminology.

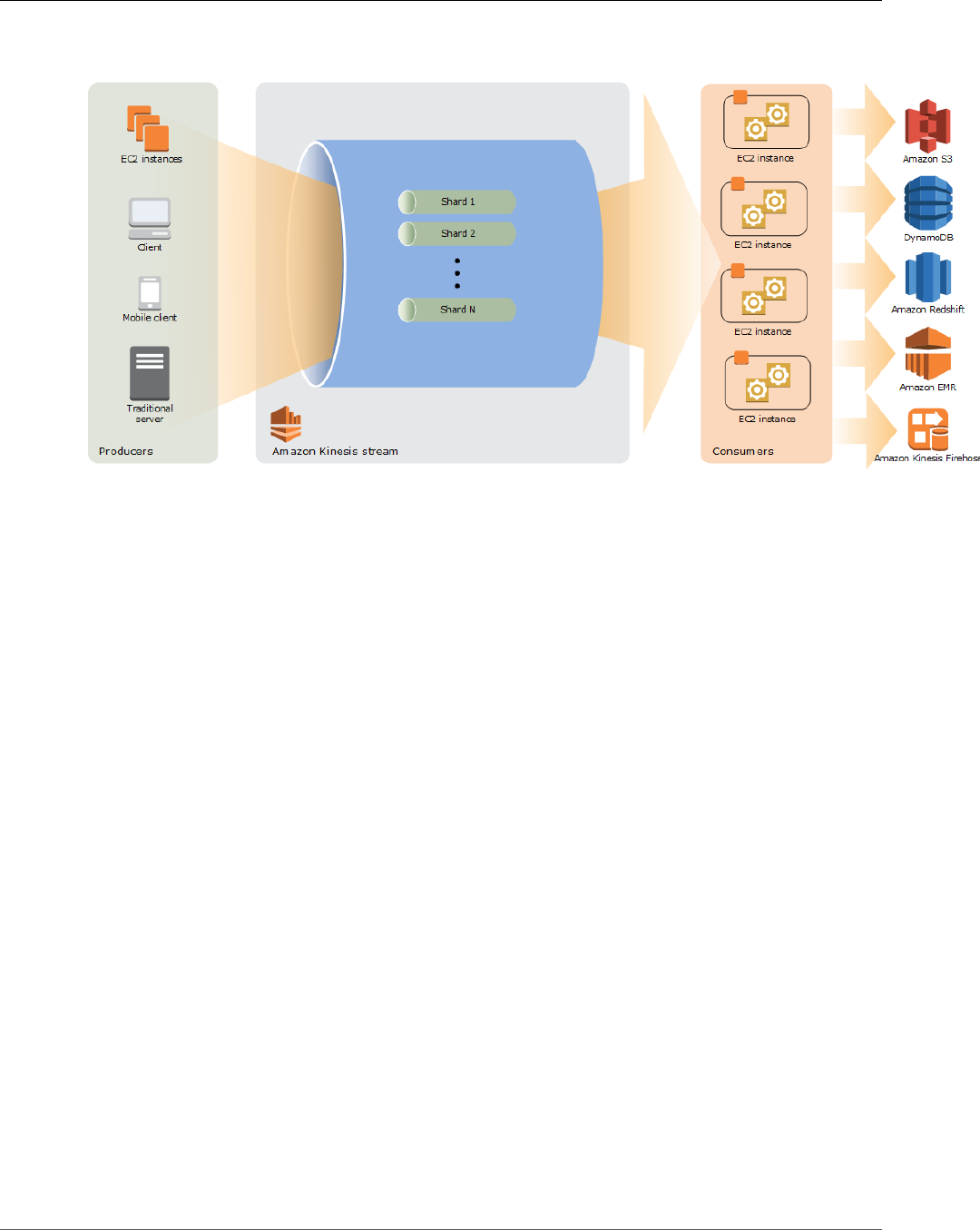

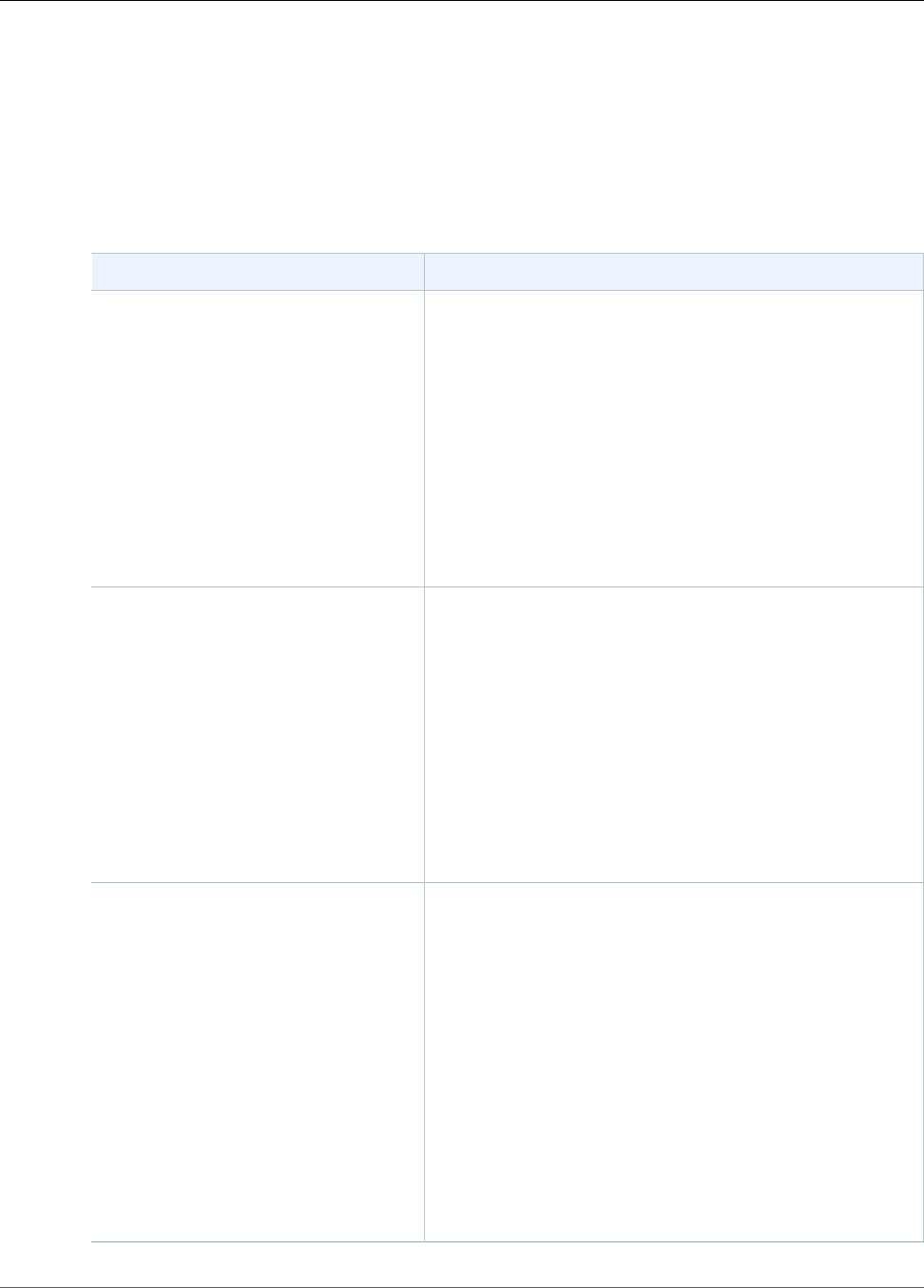

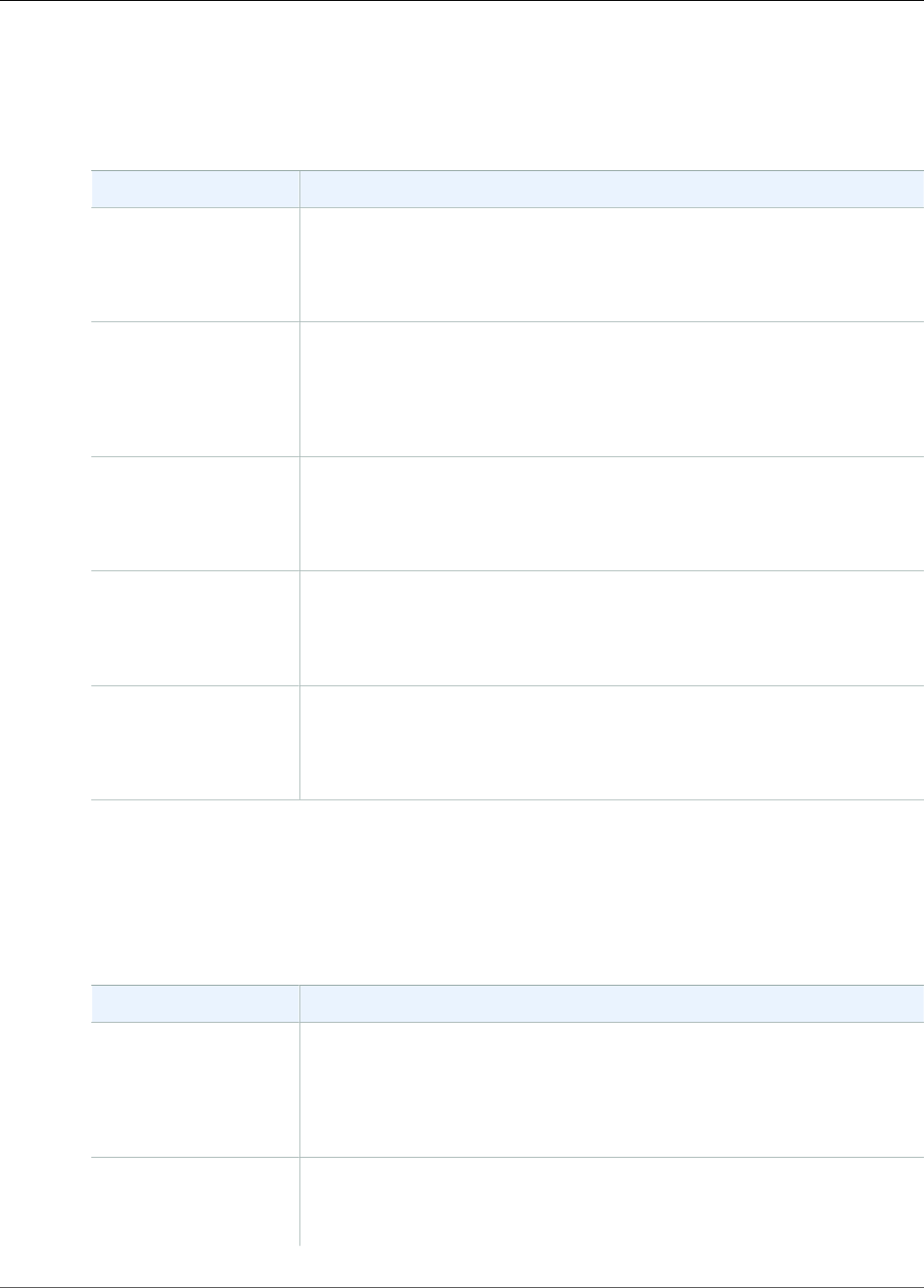

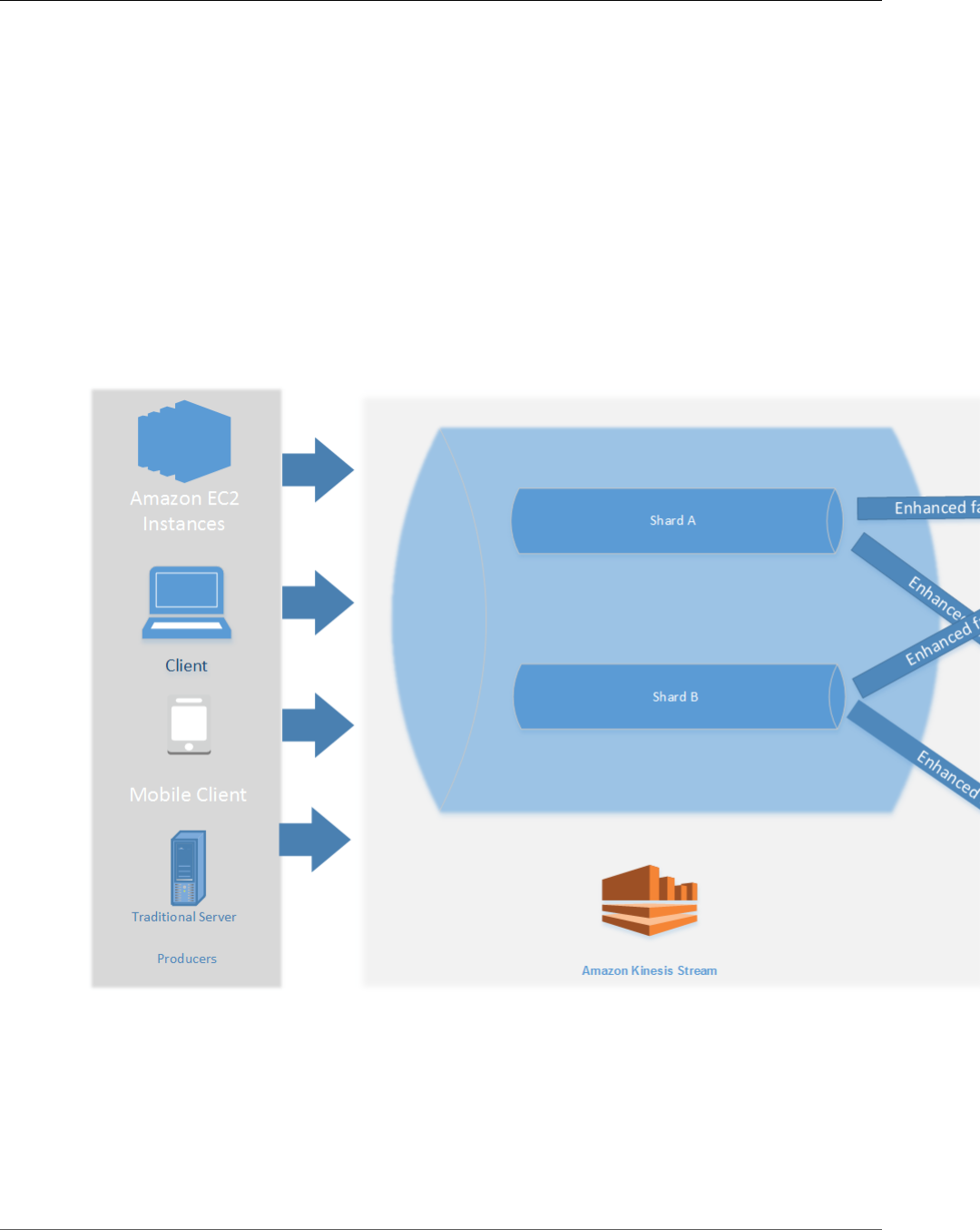

Kinesis Data Streams High-Level Architecture

The following diagram illustrates the high-level architecture of Kinesis Data Streams. The producers continually push data to Kinesis Data Streams, and the consumers process the data in real time. Consumers (such as a custom application running on Amazon EC2 or an Amazon Kinesis Data Firehose delivery stream) can store their results using an AWS service such as Amazon DynamoDB, Amazon Redshift, or Amazon S3.

Kinesis Data Streams Terminology

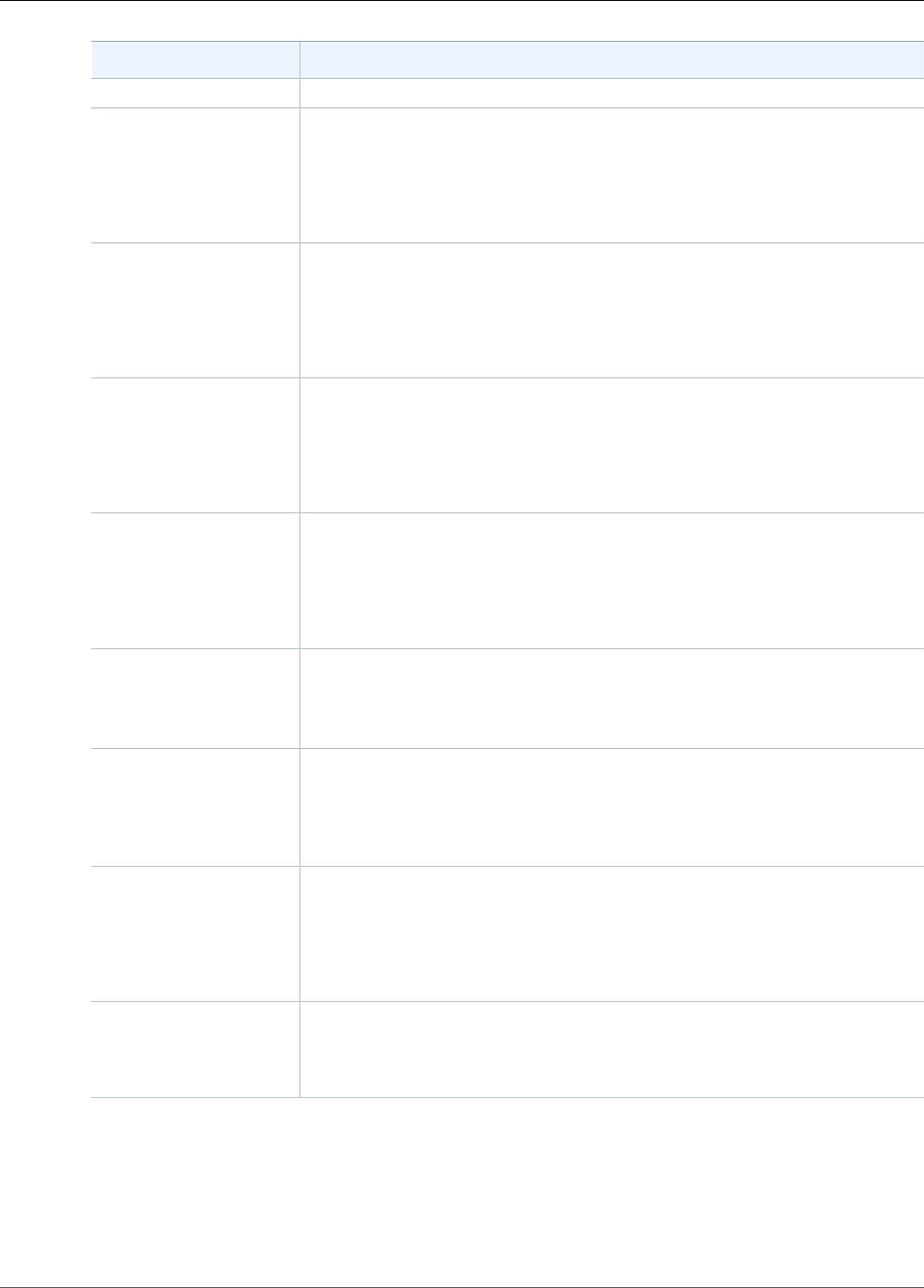

Kinesis Data Stream

A Kinesis data stream is a set of shards. Each shard has a sequence of data records. Each data record has a sequence number that is assigned by Kinesis Data Streams.

Data Record

A data record is the unit of data stored in a Kinesis data stream. Data records are composed of a sequence number, a partition key, and a data blob, which is an immutable sequence of bytes. Kinesis Data Streams does not inspect, interpret, or change the data in the blob in any way. A data blob can be up to 1 MB.

Retention Period

The retention period is the length of time that data records are accessible after they are added to the stream. By default, a stream's retention period is set to 24 hours when the stream is created. You can increase the retention period up to 168 hours (7 days) using the IncreaseStreamRetentionPeriod operation, and decrease the retention period down to a minimum of 24 hours using the DecreaseStreamRetentionPeriod operation. Additional charges apply for streams with a retention period set to more than 24 hours. For more information, see Amazon Kinesis Data Streams Pricing.
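The retention rules above can be sketched as a small helper that picks the operation to call for a desired retention period. This is only an illustrative sketch: the function name `retention_operation` and its return convention are hypothetical, and it does not call the Kinesis Data Streams API. Only the 24-hour and 168-hour bounds and the two operation names come from the text.

```python
from typing import Optional

# Bounds stated in the Retention Period definition above.
MIN_RETENTION_HOURS = 24
MAX_RETENTION_HOURS = 168


def retention_operation(current_hours: int, desired_hours: int) -> Optional[str]:
    """Return the name of the operation needed to move from the current
    retention period to the desired one, or None if no change is needed.

    Hypothetical helper for illustration; it only validates and decides.
    """
    for hours in (current_hours, desired_hours):
        if not MIN_RETENTION_HOURS <= hours <= MAX_RETENTION_HOURS:
            raise ValueError(
                f"retention period must be between {MIN_RETENTION_HOURS} "
                f"and {MAX_RETENTION_HOURS} hours, got {hours}"
            )
    if desired_hours > current_hours:
        return "IncreaseStreamRetentionPeriod"
    if desired_hours < current_hours:
        return "DecreaseStreamRetentionPeriod"
    return None
```

For example, moving a stream from the default 24 hours to 72 hours maps to IncreaseStreamRetentionPeriod, while a request for 12 hours is rejected because it falls below the 24-hour minimum.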

Producer

Producers put records into Amazon Kinesis Data Streams. For example, a web server sending log data to a stream is a producer.

Consumer

Consumers get records from Amazon Kinesis Data Streams and process them. These consumers are known as Amazon Kinesis Data Streams applications.

Amazon Kinesis Data Streams Application

An Amazon Kinesis Data Streams application is a consumer of a stream that commonly runs on a fleet of EC2 instances.

There are two types of consumers that you can develop: shared fan-out consumers and enhanced fan-out consumers. To learn about the differences between them, and to see how you can create each type of consumer, see Reading Data from Amazon Kinesis Data Streams.

The output of a Kinesis Data Streams application can be input for another stream, enabling you to create complex topologies that process data in real time. An application can also send data to a variety of other AWS services. There can be multiple applications for one stream, and each application can consume data from the stream independently and concurrently.

Shard

A shard is a uniquely identified sequence of data records in a stream. A stream is composed of one or

more shards, each of which provides a fixed unit of capacity. Each shard can support up to 5 transactions

per second for reads, up to a maximum total data read rate of 2 MB per second and up to 1,000 records

per second for writes, up to a maximum total data write rate of 1 MB per second (including partition

keys). The data capacity of your stream is a function of the number of shards that you specify for the

stream. The total capacity of the stream is the sum of the capacities of its shards.
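
Because the total capacity of a stream is the sum of the capacities of its shards, it can be computed directly from the shard count. The following is a minimal sketch that uses the per-shard figures quoted in this section; the function name `stream_capacity` and the dictionary keys are hypothetical.

```python
# Per-shard capacities as stated in this section.
READ_TRANSACTIONS_PER_SECOND = 5
READ_MB_PER_SECOND = 2
WRITE_RECORDS_PER_SECOND = 1000
WRITE_MB_PER_SECOND = 1


def stream_capacity(shard_count):
    """Sum the per-shard capacities over all shards in the stream."""
    if shard_count < 1:
        raise ValueError("a stream is composed of one or more shards")
    return {
        "read_transactions_per_second": shard_count * READ_TRANSACTIONS_PER_SECOND,
        "read_mb_per_second": shard_count * READ_MB_PER_SECOND,
        "write_records_per_second": shard_count * WRITE_RECORDS_PER_SECOND,
        "write_mb_per_second": shard_count * WRITE_MB_PER_SECOND,
    }
```

For example, a four-shard stream supports up to 4,000 records per second and 4 MB per second for writes, and up to 8 MB per second for reads.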

If your data rate changes, you can increase or decrease the number of shards allocated to your stream.

Partition Key

A partition key is used to group data by shard within a stream. Kinesis Data Streams segregates the data

records belonging to a stream into multiple shards. It uses the partition key that is associated with each

data record to determine which shard a given data record belongs to. Partition keys are Unicode strings

with a maximum length limit of 256 bytes. An MD5 hash function is used to map partition keys to 128-bit

integer values and to map associated data records to shards. When an application puts data into a

stream, it must specify a partition key.
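
The mapping from a partition key to a shard can be sketched as follows. This sketch rests on two assumptions that this section does not spell out: that the MD5 digest is read as an unsigned big-endian integer, and that the shards divide the 128-bit hash key space evenly. A stream that has been resharded may have uneven ranges. The function names are hypothetical.

```python
import hashlib

HASH_KEY_SPACE = 2 ** 128  # MD5 produces 128-bit values


def partition_key_hash(partition_key):
    """Map a partition key to a 128-bit integer using MD5."""
    digest = hashlib.md5(partition_key.encode("utf-8")).digest()
    # Assumption: the digest is interpreted as an unsigned big-endian integer.
    return int.from_bytes(digest, "big")


def shard_index(partition_key, shard_count):
    """Return the index of the shard that would own the key, assuming the
    hash key space is split evenly across shard_count shards."""
    range_size = HASH_KEY_SPACE // shard_count
    # The last shard absorbs any remainder left by the integer division.
    return min(partition_key_hash(partition_key) // range_size, shard_count - 1)
```

The same partition key always produces the same hash, so all records that share a key land in the same shard for as long as the shard layout does not change.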

Sequence Number

Each data record has a sequence number that is unique within its shard. Kinesis Data Streams assigns the

sequence number after you write to the stream with client.putRecords or client.putRecord.

Sequence numbers for the same partition key generally increase over time. The longer the time period

between write requests, the larger the sequence numbers become.

Note

Sequence numbers cannot be used as indexes to sets of data within the same stream. To

logically separate sets of data, use partition keys or create a separate stream for each dataset.

Kinesis Client Library

The Kinesis Client Library is compiled into your application to enable fault-tolerant consumption of

data from the stream. The Kinesis Client Library ensures that for every shard there is a record processor

running and processing that shard. The library also simplifies reading data from the stream. The Kinesis

Client Library uses an Amazon DynamoDB table to store control data. It creates one table per application

that is processing data.

There are two major versions of the Kinesis Client Library. Which one you use depends on the type

of consumer you want to create. For more information, see Reading Data from Amazon Kinesis Data

Streams (p. 115).

Application Name

The name of an Amazon Kinesis Data Streams application identifies the application. Each of your

applications must have a unique name that is scoped to the AWS account and Region used by the

application. This name is used as a name for the control table in Amazon DynamoDB and the namespace

for Amazon CloudWatch metrics.

Server-Side Encryption

Amazon Kinesis Data Streams can automatically encrypt sensitive data as a producer enters it into a

stream. Kinesis Data Streams uses AWS KMS master keys for encryption. For more information, see Using

Server-Side Encryption (p. 80).

Note

To read from or write to an encrypted stream, producer and consumer applications must have

permission to access the master key. For information about granting permissions to producer

and consumer applications, see the section called “Permissions to Use User-Generated KMS

Master Keys” (p. 84).

Note

Using server-side encryption incurs AWS Key Management Service (AWS KMS) costs. For more

information, see AWS Key Management Service Pricing.

Creating and Updating Data Streams

Amazon Kinesis Data Streams ingests a large amount of data in real time, durably stores the data,

and makes the data available for consumption. The unit of data stored by Kinesis Data Streams is a

data record. A data stream represents a group of data records. The data records in a data stream are

distributed into shards.

A shard has a sequence of data records in a stream. When you create a stream, you specify the number

of shards for the stream. The total capacity of a stream is the sum of the capacities of its shards. You

can increase or decrease the number of shards in a stream as needed. However, you are charged on a

per-shard basis. For information about the capacities and limits of a shard, see Kinesis Data Streams

Limits (p. 8).

A producer (p. 7) puts data records into shards and a consumer (p. 8) gets data records from

shards.

Determining the Initial Size of a Kinesis Data Stream

Before you create a stream, you need to determine an initial size for the stream. After you create the

stream, you can dynamically scale your shard capacity up or down using the AWS Management Console

or the UpdateShardCount API. You can make updates while there is a Kinesis Data Streams application

consuming data from the stream.

To determine the initial size of a stream, you need the following input values:

• The average size of the data record written to the stream in kibibytes (KiB), rounded up to the nearest

1 KiB, the data size (average_data_size_in_KiB).

• The number of data records written to and read from the stream per second (records_per_second).

• The number of Kinesis Data Streams applications that consume data concurrently and independently

from the stream, that is, the consumers (number_of_consumers).

• The incoming write bandwidth in KiB (incoming_write_bandwidth_in_KiB), which is equal to the

average_data_size_in_KiB multiplied by the records_per_second.

• The outgoing read bandwidth in KiB (outgoing_read_bandwidth_in_KiB), which is equal to the

incoming_write_bandwidth_in_KiB multiplied by the number_of_consumers.

You can calculate the initial number of shards (number_of_shards) that your stream needs by using

the input values in the following formula:

number_of_shards = max(incoming_write_bandwidth_in_KiB/1024, outgoing_read_bandwidth_in_KiB/2048)
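
The sizing formula can be worked through in a few lines of code. This is a sketch under two assumptions that the formula itself does not state: the result is rounded up to a whole number of shards, and a stream always has at least one shard. The function name `initial_shard_count` is hypothetical.

```python
import math


def initial_shard_count(average_data_size_in_kib, records_per_second,
                        number_of_consumers):
    """Estimate the initial number of shards for a stream."""
    incoming_write_bandwidth_in_kib = average_data_size_in_kib * records_per_second
    outgoing_read_bandwidth_in_kib = (
        incoming_write_bandwidth_in_kib * number_of_consumers
    )
    shards = max(
        incoming_write_bandwidth_in_kib / 1024,  # write limit per shard, in KiB
        outgoing_read_bandwidth_in_kib / 2048,   # read limit per shard, in KiB
    )
    # Assumption: round up, and never go below one shard.
    return max(1, math.ceil(shards))
```

For example, with 2 KiB records, 1,000 records per second, and 3 consumers, the incoming bandwidth is 2,000 KiB and the outgoing bandwidth is 6,000 KiB. The formula gives max(1.95, 2.93), which rounds up to 3 shards.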

Creating a Stream

You can create a stream using the Kinesis Data Streams console, the Kinesis Data Streams API, or the

AWS Command Line Interface (AWS CLI).

To create a data stream using the console

1. Sign in to the AWS Management Console and open the Kinesis console at

https://console.aws.amazon.com/kinesis.

2. In the navigation bar, expand the Region selector and choose a Region.

3. Choose Create data stream.

4. On the Create Kinesis stream page, enter a name for your stream and the number of shards you

need, and then click Create Kinesis stream.

On the Kinesis streams page, your stream's Status is Creating while the stream is being created.

When the stream is ready to use, the Status changes to Active.

5. Choose the name of your stream. The Stream Details page displays a summary of your stream

configuration, along with monitoring information.

To create a stream using the Kinesis Data Streams API

• For information about creating a stream using the Kinesis Data Streams API, see Creating a

Stream (p. 38).

To create a stream using the AWS CLI

• For information about creating a stream using the AWS CLI, see the create-stream command.
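
As a brief illustration of the API route, the following sketch creates a stream and waits for it to become usable. It assumes the AWS SDK for Python (boto3) rather than the Java SDK that the rest of this guide uses, and the function name `create_stream_and_wait` is hypothetical. The `client` argument is expected to be a boto3 Kinesis client, created for example with `boto3.client("kinesis", region_name="us-west-2")`.

```python
def create_stream_and_wait(client, stream_name, shard_count):
    """Create a stream, wait until it is ready, and return its status."""
    client.create_stream(StreamName=stream_name, ShardCount=shard_count)
    # The "stream_exists" waiter polls the service until the stream is
    # reported as ready, or raises if it gives up.
    client.get_waiter("stream_exists").wait(StreamName=stream_name)
    summary = client.describe_stream_summary(StreamName=stream_name)
    return summary["StreamDescriptionSummary"]["StreamStatus"]
```

Passing the client in, instead of creating it inside the function, keeps the Region and credentials under the control of the caller.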

Updating a Stream

You can update the details of a stream using the Kinesis Data Streams console, the Kinesis Data Streams

API, or the AWS CLI.

Note

You can enable server-side encryption for existing streams, or for streams that you have recently

created.

To update a data stream using the console

1. Open the Amazon Kinesis console at https://console.aws.amazon.com/kinesis/.

2. In the navigation bar, expand the Region selector and choose a Region.

3. Choose the name of your stream in the list. The Stream Details page displays a summary of your

stream configuration and monitoring information.

4. To edit the number of shards, choose Edit in the Shards section, and then enter a new shard count.

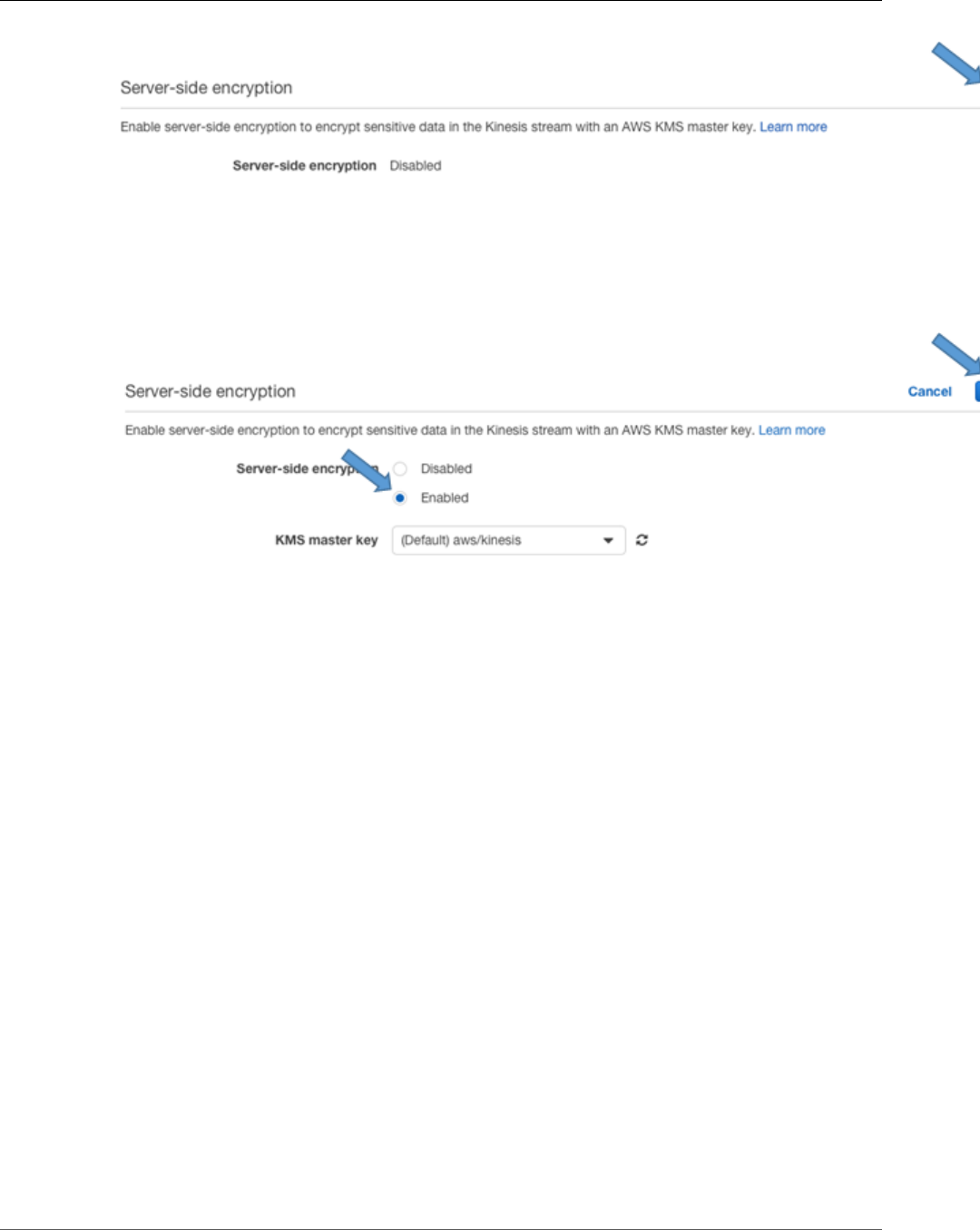

5. To enable server-side encryption of data records, choose Edit in the Server-side encryption section.

Choose a KMS key to use as the master key for encryption, or use the default master key,

aws/kinesis, managed by Kinesis. If you enable encryption for a stream and use your own AWS KMS

master key, ensure that your producer and consumer applications have access to the AWS KMS

master key that you used. To assign permissions to an application to access a user-generated AWS

KMS key, see the section called “Permissions to Use User-Generated KMS Master Keys” (p. 84).

6. To edit the data retention period, choose Edit in the Data retention period section, and then enter a

new data retention period.

7. If you have enabled custom metrics on your account, choose Edit in the Shard level metrics section,

and then specify metrics for your stream. For more information, see the section called “Monitoring

the Service with CloudWatch” (p. 51).

Updating a Stream Using the API

To update stream details using the API, see the following methods:

•AddTagsToStream

•DecreaseStreamRetentionPeriod

•DisableEnhancedMonitoring

•EnableEnhancedMonitoring

•IncreaseStreamRetentionPeriod

•RemoveTagsFromStream

•StartStreamEncryption

•StopStreamEncryption

•UpdateShardCount

Updating a Stream Using the AWS CLI

For information about updating a stream using the AWS CLI, see the Kinesis CLI reference.
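
Two of the update operations listed above, UpdateShardCount and StartStreamEncryption, can be sketched with the AWS SDK for Python (boto3). The SDK choice and the function names `scale_stream` and `enable_encryption` are assumptions made for this illustration. The `client` argument is expected to be a boto3 Kinesis client.

```python
def scale_stream(client, stream_name, target_shard_count):
    """Request a new shard count for the stream (UpdateShardCount)."""
    client.update_shard_count(
        StreamName=stream_name,
        TargetShardCount=target_shard_count,
        ScalingType="UNIFORM_SCALING",
    )


def enable_encryption(client, stream_name, kms_key_id="alias/aws/kinesis"):
    """Turn on server-side encryption (StartStreamEncryption).

    The default key ID refers to the aws/kinesis master key mentioned above.
    """
    client.start_stream_encryption(
        StreamName=stream_name,
        EncryptionType="KMS",
        KeyId=kms_key_id,
    )
```

The two functions are kept separate on purpose. Each request changes the state of the stream, so wait for the stream to return to the Active status before you issue the next update.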

Kinesis Data Streams Producers

A producer puts data records into Amazon Kinesis data streams. For example, a web server sending log

data to a Kinesis data stream is a producer. A consumer (p. 8) processes the data records from a

stream.

Important

Kinesis Data Streams supports changes to the data record retention period of your data stream.

For more information, see Changing the Data Retention Period (p. 47).

To put data into the stream, you must specify the name of the stream, a partition key, and the data blob

to be added to the stream. The partition key is used to determine which shard in the stream the data

record is added to.
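
The three required inputs map directly onto a PutRecord call. The following sketch uses the AWS SDK for Python (boto3), which is an assumption of this illustration, and the function name `put_log_record` and the JSON encoding of the payload are hypothetical choices. The `client` argument is expected to be a boto3 Kinesis client.

```python
import json


def put_log_record(client, stream_name, log_event, partition_key):
    """Put one record into a stream and report where it landed."""
    response = client.put_record(
        StreamName=stream_name,                      # the stream to write to
        Data=json.dumps(log_event).encode("utf-8"),  # the data blob
        PartitionKey=partition_key,                  # determines the shard
    )
    # The service assigns the shard and the sequence number.
    return response["ShardId"], response["SequenceNumber"]
```

A web server could, for instance, use its host name or a session ID as the partition key so that related log lines are written to the same shard.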

All the data in the shard is sent to the same worker that is processing the shard. Which partition key you

use depends on your application logic. The number of partition keys should typically be much greater

than the number of shards. This is because the partition key is used to determine how to map a data

record to a particular shard. If you have enough partition keys, the data can be evenly distributed across

the shards in a stream.

For more information, see Adding Data to a Stream (p. 98) (includes Java example code), the

PutRecords and PutRecord operations in the Kinesis Data Streams API, or the put-record command.

Kinesis Data Streams Consumers

A consumer, known as an Amazon Kinesis Data Streams application, is an application that you build to

read and process data records from Kinesis data streams.

If you want to send stream records directly to services such as Amazon Simple Storage Service (Amazon

S3), Amazon Redshift, Amazon Elasticsearch Service (Amazon ES), or Splunk, you can use a Kinesis

Data Firehose delivery stream instead of creating a consumer application. For more information, see

Creating an Amazon Kinesis Firehose Delivery Stream in the Kinesis Data Firehose Developer Guide.

However, if you need to process data records in a custom way, see Reading Data from Amazon Kinesis

Data Streams (p. 115) for guidance on how to build a consumer.

When you build a consumer, you can deploy it to an Amazon EC2 instance by adding it to one of your

Amazon Machine Images (AMIs). You can scale the consumer by running it on multiple Amazon EC2

instances under an Auto Scaling group. Using an Auto Scaling group helps automatically start new

instances if there is an EC2 instance failure. It can also elastically scale the number of instances as the

load on the application changes over time. Auto Scaling groups ensure that a certain number of EC2

instances are always running. To trigger scaling events in the Auto Scaling group, you can specify metrics

such as CPU and memory utilization to scale up or down the number of EC2 instances processing data

from the stream. For more information, see the Amazon EC2 Auto Scaling User Guide.

Kinesis Data Streams Limits

Amazon Kinesis Data Streams has the following stream and shard limits.

• There is no upper limit on the number of shards you can have in a stream or account. It is common for

a workload to have thousands of shards in a single stream.

• There is no upper limit on the number of streams you can have in an account.

• A single shard can ingest up to 1 MiB of data per second (including partition keys) or 1,000 records per

second for writes. Similarly, if you scale your stream to 5,000 shards, the stream can ingest up to 5 GiB

per second or 5 million records per second. If you need more ingest capacity, you can easily scale up

the number of shards in the stream using the AWS Management Console or the UpdateShardCount

API.

• The default shard limit is 500 shards for the following AWS Regions: US East (N. Virginia), US West

(Oregon), and EU (Ireland). For all other Regions, the default shard limit is 200 shards.

• The maximum size of the data payload of a record before base64-encoding is up to 1 MiB.

• GetRecords can retrieve up to 10 MiB of data per call from a single shard, and up to 10,000 records per

call. Each call to GetRecords is counted as one read transaction.

• Each shard can support up to five read transactions per second. Each read transaction can provide up

to 10,000 records with an upper limit of 10 MiB per transaction.

• Each shard can support up to a maximum total data read rate of 2 MiB per second via GetRecords.

If a call to GetRecords returns 10 MiB, subsequent calls made within the next 5 seconds throw an

exception.
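
The 5-second figure in the last limit follows from the read rate: 10 MiB returned at 2 MiB per second uses up 5 seconds of read capacity. The following sketch generalizes that arithmetic. It is one way to reason about the limit, not a description of how the service enforces it, and the function name `seconds_until_next_read` is hypothetical.

```python
MIB = 1024 * 1024
READ_BYTES_PER_SECOND = 2 * MIB  # per-shard read rate stated above


def seconds_until_next_read(bytes_returned):
    """Estimate how long a GetRecords response of this size uses up the
    per-shard read rate."""
    return bytes_returned / READ_BYTES_PER_SECOND
```

A consumer that fetches large batches can use an estimate like this to pace its calls instead of retrying immediately.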

API limits

Like most AWS APIs, Kinesis Data Streams API operations are rate-limited. For information about API call

rate limits, see the Amazon Kinesis API Reference. If you encounter API throttling, we encourage you to

request a limit increase.

Getting Started Using Amazon Kinesis Data Streams

This documentation helps you get started using Amazon Kinesis Data Streams. If you are new to Kinesis

Data Streams, start by becoming familiar with the concepts and terminology presented in What Is

Amazon Kinesis Data Streams? (p. 1).

Topics

•Setting Up for Amazon Kinesis Data Streams (p. 10)

•Tutorial: Visualizing Web Traffic Using Amazon Kinesis Data Streams (p. 11)

•Tutorial: Getting Started With Amazon Kinesis Data Streams Using AWS CLI (p. 17)

•Tutorial: Analyzing Real-Time Stock Data Using Kinesis Data Streams (p. 23)

Setting Up for Amazon Kinesis Data Streams

Before you use Amazon Kinesis Data Streams for the first time, complete the following tasks.

Tasks

•Sign Up for AWS (p. 10)

•Download Libraries and Tools (p. 10)

•Configure Your Development Environment (p. 11)

Sign Up for AWS

When you sign up for Amazon Web Services (AWS), your AWS account is automatically signed up for all

services in AWS, including Kinesis Data Streams. You are charged only for the services that you use.

If you have an AWS account already, skip to the next task. If you don't have an AWS account, use the

following procedure to create one.

To sign up for an AWS account

1. Open https://aws.amazon.com/, and then choose Create an AWS Account.

Note

If you previously signed in to the AWS Management Console using AWS account root user

credentials, choose Sign in to a different account. If you previously signed in to the console

using IAM credentials, choose Sign-in using root account credentials. Then choose Create

a new AWS account.

2. Follow the online instructions.

Part of the sign-up procedure involves receiving a phone call and entering a verification code using

the phone keypad.

Download Libraries and Tools

The following libraries and tools will help you work with Kinesis Data Streams:

• The Amazon Kinesis API Reference is the basic set of operations that Kinesis Data Streams supports.

For more information about performing basic operations using Java code, see the following:

•Developing Producers Using the Amazon Kinesis Data Streams API with the AWS SDK for

Java (p. 98)

•Developing Consumers Using the Kinesis Data Streams API with the AWS SDK for Java (p. 134)

•Creating and Managing Streams (p. 38)

• The AWS SDKs for Go, Java, JavaScript, .NET, Node.js, PHP, Python, and Ruby include Kinesis Data

Streams support and samples. If your version of the AWS SDK for Java does not include samples for

Kinesis Data Streams, you can also download them from GitHub.

• The Kinesis Client Library (KCL) provides an easy-to-use programming model for processing data. The

KCL can help you get started quickly with Kinesis Data Streams in Java, Node.js, .NET, Python, and

Ruby. For more information see Reading Data from Streams (p. 115).

• The AWS Command Line Interface supports Kinesis Data Streams. The AWS CLI enables you to control

multiple AWS services from the command line and automate them through scripts.

Configure Your Development Environment

To use the KCL, ensure that your Java development environment meets the following requirements:

• Java 1.7 (Java SE 7 JDK) or later. You can download the latest Java software from Java SE Downloads

on the Oracle website.

• Apache Commons package (Code, HTTP Client, and Logging)

• Jackson JSON processor

Note that the AWS SDK for Java includes Apache Commons and Jackson in the third-party folder.

However, the SDK for Java works with Java 1.6, while the Kinesis Client Library requires Java 1.7.

Tutorial: Visualizing Web Traffic Using Amazon Kinesis Data Streams

This tutorial helps you get started using Amazon Kinesis Data Streams by providing an introduction

to key Kinesis Data Streams constructs; specifically streams (p. 5), data producers (p. 7), and data

consumers (p. 8). The tutorial uses a sample application based upon a common use case of real-time data

analytics, as introduced in What Is Amazon Kinesis Data Streams? (p. 1).

The web application for this sample uses a simple JavaScript application to poll the DynamoDB table

used to store the results of the Top-N analysis over a sliding window. The application takes this data and

creates a visualization of the results.

Kinesis Data Streams Data Visualization Sample Application

The data visualization sample application for this tutorial demonstrates how to use Kinesis Data Streams

for real-time data ingestion and analysis. The sample application creates a data producer that puts

simulated visitor counts from various URLs into a Kinesis data stream. The stream durably stores these

data records in the order they are received. The data consumer gets these records from the stream, and

then calculates how many visitors originated from a particular URL. Finally, a simple web application

polls the results in real time to provide a visualization of the calculations.

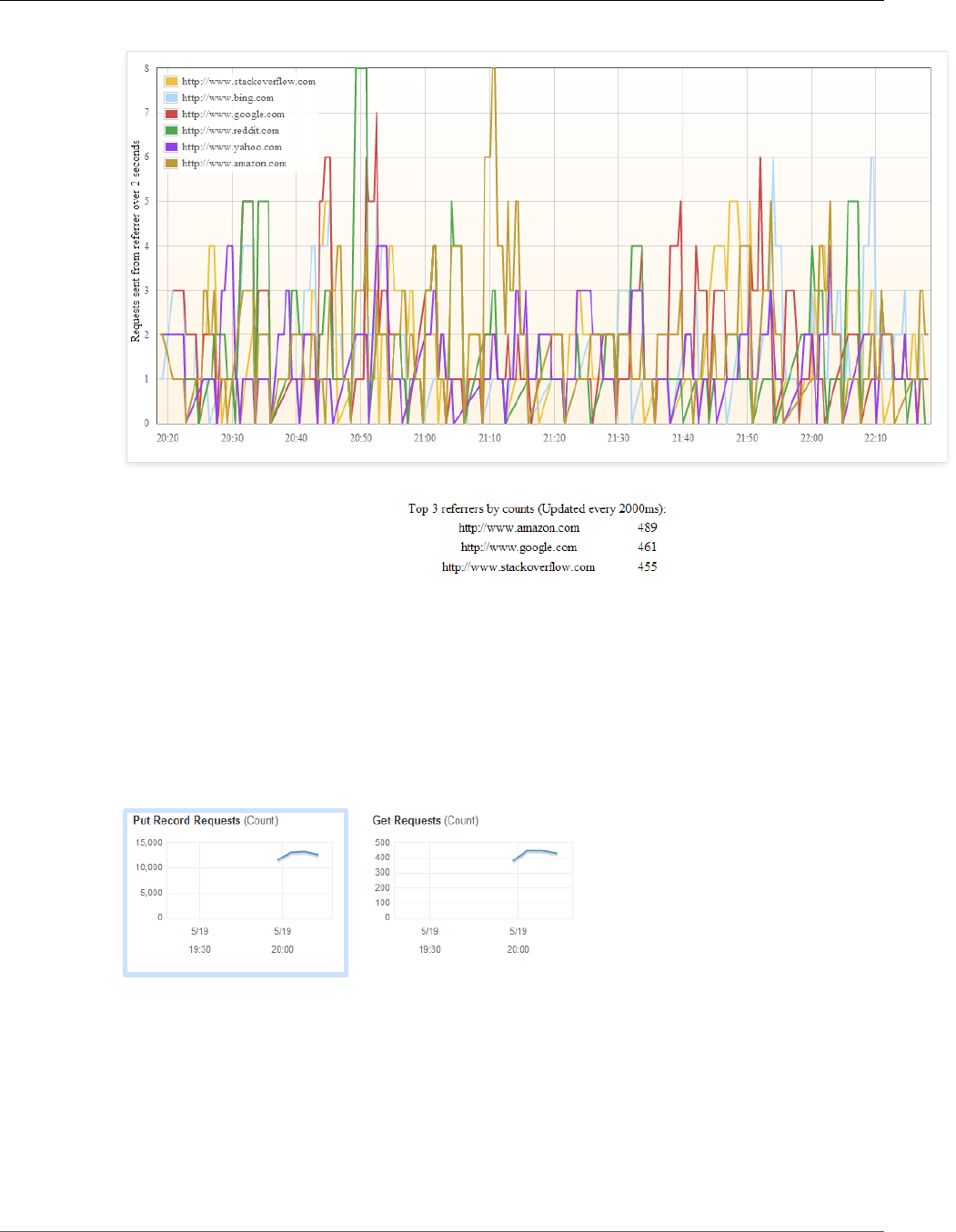

The sample application demonstrates the common stream processing use case of performing a sliding

window analysis over a 10-second period. The data displayed in the above visualization reflects the

results of the sliding window analysis of the stream as a continuously updated graph. In addition, the

data consumer performs Top-N analysis over the data stream to compute the top three referrers by

count, which is displayed in the table immediately below the graph and updated every two seconds.

To get you started quickly, the sample application uses AWS CloudFormation. AWS CloudFormation

allows you to create templates to describe the AWS resources and any associated dependencies or

runtime parameters required to run your application. The sample application uses a template to create

all the necessary resources quickly, including producer and consumer applications running on an Amazon

EC2 instance and a table in Amazon DynamoDB to store the aggregate record counts.

Note

After the sample application starts, it incurs nominal charges for Kinesis Data Streams usage.

Where possible, the sample application uses resources that are eligible for the AWS Free Tier.

When you are finished with this tutorial, delete your AWS resources to stop incurring charges.

For more information, see Step 3: Delete Sample Application (p. 16).

Prerequisites

This tutorial helps you set up, run, and view the results of the Kinesis Data Streams data visualization

sample application. To get started with the sample application, you first need to do the following:

• Set up a computer with a working Internet connection.

• Sign up for an AWS account.

• Additionally, read through the introductory sections to gain a high-level understanding of

streams (p. 5), data producers (p. 7), and data consumers (p. 8).

Step 1: Start the Sample Application

Start the sample application using an AWS CloudFormation template provided by AWS. The sample

application has a stream writer that randomly generates records and sends them to a Kinesis data

stream, a data consumer that counts the number of HTTPS requests to a resource, and a web application

that displays the outputs of the stream processing data as a continuously updated graph.

To start the application

1. Open the AWS CloudFormation template for this tutorial.

2. On the Select Template page, the URL for the template is provided. Choose Next.

3. On the Specify Details page, note that the default instance type is t2.micro. However, T2

instances require a VPC. If your AWS account does not have a default VPC in your region, you must

change InstanceType to another instance type, such as m3.medium. Choose Next.

4. On the Options page, you can optionally type a tag key and tag value. This tag will be added to the

resources created by the template, such as the EC2 instance. Choose Next.

5. On the Review page, select I acknowledge that this template might cause AWS CloudFormation

to create IAM resources, and then choose Create.

Initially, you should see a stack named KinesisDataVisSample with the status CREATE_IN_PROGRESS.

The stack can take several minutes to create. When the status is CREATE_COMPLETE, continue to the

next step. If the status does not update, refresh the page.

Step 2: View the Components of the Sample Application

Components

•Kinesis Data Stream (p. 13)

•Data Producer (p. 14)

•Data Consumer (p. 15)

Kinesis Data Stream

A stream (p. 5) can ingest data in real time from a large number of producers, durably store

the data, and provide the data to multiple consumers. A stream represents an ordered sequence of data

records. When you create a stream, you must specify a stream name and a shard count. A stream consists

of one or more shards; each shard is a group of data records.

AWS CloudFormation automatically creates the stream for the sample application. This section of the

AWS CloudFormation template shows the parameters used in the CreateStream operation.

To view the stack details

1. Select the KinesisDataVisSample stack.

2. On the Outputs tab, choose the link in URL. The form of the URL should be similar to the following:

http://ec2-xx-xx-xx-xx.compute-1.amazonaws.com.

3. It takes about 10 minutes for the application stack to be created and for meaningful data to show

up in the data analysis graph. The real-time data analysis graph appears on a separate page, titled

Kinesis Data Streams Data Visualization Sample. It displays the number of requests sent by the

referring URL over a 10 second span, and the chart is updated every 1 second. The span of the graph

is the last 2 minutes.

To view the stream details

1. Open the Kinesis console at https://console.aws.amazon.com/kinesis.

2. Select the stream whose name has the following form:

KinesisDataVisSampleApp-KinesisStream-[randomString].

3. Choose the name of the stream to view the stream details.

4. The graphs display the activity of the data producer putting records into the stream and the data

consumer getting data from the stream.

Data Producer

A data producer (p. 7) submits data records to the Kinesis data stream. To put data into the stream,

producers call the PutRecord operation on a stream.

Each PutRecord call requires the stream name, partition key, and the data record that the producer

is adding to the stream. The stream name determines the stream in which the record will reside. The

partition key is used to determine the shard in the stream that the data record will be added to.

Which partition key you use depends on your application logic. In most cases, the number of partition keys

should be much greater than the number of shards. A high number of partition keys relative to

shards allows the stream to distribute data records evenly across the shards in your stream.

The data producer uses six popular URLs as a partition key for each record put into the two-shard stream.

These URLs represent simulated page visits. Rows 99-132 of the HttpReferrerKinesisPutter code send

the data to Kinesis Data Streams. The three required parameters are set before calling PutRecord. The

partition key is set using pair.getResource, which randomly selects one of the six URLs created in

rows 85-92 of the HttpReferrerStreamWriter code.

A data producer can be anything that puts data to Kinesis Data Streams, such as an EC2 instance, client

browser, or mobile device. The sample application uses an EC2 instance for its data producer as well

as its data consumer, whereas most real-life scenarios would have separate EC2 instances for each

component of the application. You can view EC2 instance data from the sample application by following

the instructions below.

To view the instance data in the console

1. Open the Amazon EC2 console at https://console.aws.amazon.com/ec2/.

2. On the navigation pane, choose Instances.

3. Select the instance created for the sample application. If you aren't sure which instance this is, it has

a security group with a name that starts with KinesisDataVisSample.

4. On the Monitoring tab, you'll see the resource usage of the sample application's data producer and

data consumer.

Data Consumer

Data consumers (p. 8) retrieve and process data records from shards in a Kinesis data stream. Each

consumer reads data from a particular shard. Consumers retrieve data from a shard using the

GetShardIterator and GetRecords operations.

A shard iterator represents the position of the stream and shard from which the consumer will read. A

consumer gets a shard iterator when it starts reading from a stream or changes the position from which

it reads records from a stream. To get a shard iterator, you must provide a stream name, shard ID, and

shard iterator type. The shard iterator type allows the consumer to specify where in the stream it would

like to start reading from, such as from the start of the stream where the data is arriving in real-time. The

stream returns the records in a batch, whose size you can control using the optional limit parameter.
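
The two-step read described above, getting a shard iterator and then getting records, can be sketched as follows. The sketch uses the AWS SDK for Python (boto3), which is an assumption of this illustration, and the function name `read_batch` and its default values are hypothetical. The `client` argument is expected to be a boto3 Kinesis client.

```python
def read_batch(client, stream_name, shard_id,
               iterator_type="TRIM_HORIZON", limit=100):
    """Read one batch of records from a shard.

    Returns the records and the iterator to use for the next call.
    """
    # Step 1: get a shard iterator for the chosen position in the shard.
    iterator = client.get_shard_iterator(
        StreamName=stream_name,
        ShardId=shard_id,
        ShardIteratorType=iterator_type,
    )["ShardIterator"]
    # Step 2: get a batch of records; Limit caps the batch size.
    response = client.get_records(ShardIterator=iterator, Limit=limit)
    return response["Records"], response.get("NextShardIterator")
```

To keep reading, pass the returned iterator to the next GetRecords call instead of requesting a new shard iterator each time.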

The data consumer creates a table in DynamoDB to maintain state information (such as checkpoints and

worker-shard mapping) for the application. Each application has its own DynamoDB table.

The data consumer counts visitor requests from each particular URL over the last two seconds. This type

of real-time application employs Top-N analysis over a sliding window. In this case, the Top-N are the

top three pages by visitor requests and the sliding window is two seconds. This is a common processing

pattern that is demonstrative of real-world data analyses using Kinesis Data Streams. The results of this

calculation are persisted to a DynamoDB table.
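
The Top-N analysis over a sliding window can be illustrated with a small in-memory counter. This is a sketch of the general technique, not the sample application's CountingRecordProcessor code. The class name `SlidingWindowTopN` is hypothetical, and the sketch assumes that events are added in timestamp order.

```python
from collections import Counter, deque


class SlidingWindowTopN:
    """Count keys seen within the last window_seconds and report the top n."""

    def __init__(self, window_seconds, n):
        self.window_seconds = window_seconds
        self.n = n
        self._events = deque()    # (timestamp, key), oldest first
        self._counts = Counter()  # key -> count inside the window

    def add(self, timestamp, key):
        """Record one event. Assumes timestamps never decrease."""
        self._events.append((timestamp, key))
        self._counts[key] += 1

    def top(self, now):
        """Drop events that have left the window, then return the top n."""
        cutoff = now - self.window_seconds
        while self._events and self._events[0][0] <= cutoff:
            _, old_key = self._events.popleft()
            self._counts[old_key] -= 1
            if self._counts[old_key] == 0:
                del self._counts[old_key]
        return self._counts.most_common(self.n)
```

With `SlidingWindowTopN(window_seconds=2, n=3)`, each call to `top` returns up to three (key, count) pairs for the last two seconds, which matches the shape of the analysis described above.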

To view the Amazon DynamoDB tables

1. Open the DynamoDB console at https://console.aws.amazon.com/dynamodb/.

2. On the navigation pane, select Tables.

3. There are two tables created by the sample application:

• KinesisDataVisSampleApp-KCLDynamoDBTable-[randomString]—Manages state information.

• KinesisDataVisSampleApp-CountsDynamoDBTable-[randomString]—Persists the results of the

Top-N analysis over a sliding window.

4. Select the KinesisDataVisSampleApp-KCLDynamoDBTable-[randomString] table. There are two

entries in the table, indicating the particular shard (leaseKey), position in the stream (checkpoint),

and the application reading the data (leaseOwner).

5. Select the KinesisDataVisSampleApp-CountsDynamoDBTable-[randomString] table. You can see the

aggregated visitor counts (referrerCounts) that the data consumer calculates as part of the sliding

window analysis.

Kinesis Client Library (KCL)

Consumer applications can use the Kinesis Client Library (KCL) to simplify parallel processing of the

stream. The KCL takes care of many of the complex tasks associated with distributed computing, such

as load-balancing across multiple instances, responding to instance failures, checkpointing processed

records, and reacting to resharding. The KCL enables you to focus on writing record processing logic.

The data consumer provides the KCL with the position in the stream that it wants to read from, in this

case specifying the latest possible data from the beginning of the stream. The library uses this to call

GetShardIterator on behalf of the consumer. The consumer component also provides the client

library with what to do with the records that are processed using an important KCL interface called

IRecordProcessor. The KCL calls GetRecords on behalf of the consumer and then processes those

records as specified by IRecordProcessor.

•Rows 92-98 of the HttpReferrerCounterApplication sample code configure the KCL. This sets up the

library with its initial configuration, such as setting the position in the stream from which to read

data.

•Rows 104-108 of the HttpReferrerCounterApplication sample code inform the KCL of what logic to use

when processing records using an important client library component, IRecordProcessor.

•Rows 186-203 of the CountingRecordProcessor sample code include the counting logic for the Top-N

analysis using IRecordProcessor.

Step 3: Delete Sample Application

The sample application creates two shards, which incur shard usage charges while the application runs.

To ensure that your AWS account does not continue to be billed, delete your AWS CloudFormation stack

after you finish with the sample application.

To delete application resources

1. Open the AWS CloudFormation console at https://console.aws.amazon.com/cloudformation.

2. Select the stack.

3. Choose Actions, Delete Stack.

4. When prompted for confirmation, choose Yes, Delete.

The status changes to DELETE_IN_PROGRESS while AWS CloudFormation cleans up the resources

associated with the sample application. When AWS CloudFormation is finished cleaning up the resources,

it removes the stack from the list.

Step 4: Next Steps

• You can explore the source code for the Data Visualization Sample Application on GitHub.

• You can find more advanced material about using the Kinesis Data Streams API in Developing

Producers Using the Amazon Kinesis Data Streams API with the AWS SDK for Java (p. 98),

Developing Consumers Using the Kinesis Data Streams API with the AWS SDK for Java (p. 134), and

Creating and Managing Streams (p. 38).

• You can find a sample application in the AWS SDK for Java that uses the SDK to put and get data from

Kinesis Data Streams.

Tutorial: Getting Started With Amazon Kinesis Data Streams Using AWS CLI

This tutorial shows you how to perform basic Amazon Kinesis Data Streams operations using the AWS

Command Line Interface. You will learn fundamental Kinesis Data Streams data flow principles and the

steps necessary to put data into and get data from a Kinesis data stream.

For CLI access, you need an access key ID and secret access key. Use IAM user access keys instead of AWS

account root user access keys. IAM lets you securely control access to AWS services and resources in your

AWS account. For more information about creating access keys, see Understanding and Getting Your

Security Credentials in the AWS General Reference.

You can find detailed step-by-step IAM and security key setup instructions at Create an IAM User.

In this tutorial, commands are given verbatim, except where specific values necessarily

differ for each run. The examples use the US West (Oregon) region, but the

tutorial works in any region that supports Kinesis Data Streams.

Topics

• Install and Configure the AWS CLI (p. 17)

• Perform Basic Stream Operations (p. 19)

Install and Configure the AWS CLI

Install AWS CLI

Use the following process to install the AWS CLI for Windows and for Linux, macOS, and Unix operating

systems.

Windows

1. Download the appropriate MSI installer from the Windows section of the full installation

instructions in the AWS Command Line Interface User Guide.

2. Run the downloaded MSI installer.

3. Follow the instructions that appear.

Linux, macOS, or Unix

These steps require Python 2.6.5 or higher. If you have any problems, see the full installation instructions

in the AWS Command Line Interface User Guide.

1. Download and run the installation script from the pip website:

curl "https://bootstrap.pypa.io/get-pip.py" -o "get-pip.py"

sudo python get-pip.py

2. Install the AWS CLI using pip:

sudo pip install awscli

Use the following command to list available options and services:

aws help

You will be using the Kinesis Data Streams service, so you can review the AWS CLI subcommands related

to Kinesis Data Streams using the following command:

aws kinesis help

This command results in output that includes the available Kinesis Data Streams commands:

AVAILABLE COMMANDS

o add-tags-to-stream

o create-stream

o delete-stream

o describe-stream

o get-records

o get-shard-iterator

o help

o list-streams

o list-tags-for-stream

o merge-shards

o put-record

o put-records

o remove-tags-from-stream

o split-shard

o wait

This command list corresponds to the Kinesis Data Streams API documented in the Amazon Kinesis

Service API Reference. For example, the create-stream command corresponds to the CreateStream

API action.
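
The naming correspondence can be sketched in a few lines of Python. This is only an illustration of the convention described above (hyphenated CLI command name to CamelCase API action name); the function name is hypothetical, and entries such as help and wait appear to be CLI conveniences rather than API actions, so the mapping should not be applied to them.

```python
def cli_command_to_api_action(command: str) -> str:
    """Convert a hyphenated CLI command name to the CamelCase API action name."""
    return "".join(part.capitalize() for part in command.split("-"))


for command in ["create-stream", "get-shard-iterator", "put-records"]:
    print(command, "->", cli_command_to_api_action(command))
```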

The AWS CLI is now installed but not yet configured. Configuration is covered in the next section.

Configure AWS CLI

For general use, the aws configure command is the fastest way to set up your AWS CLI installation.

This is a one-time setup if your preferences don't change because the AWS CLI remembers your settings

between sessions.

aws configure

AWS Access Key ID [None]: AKIAIOSFODNN7EXAMPLE

AWS Secret Access Key [None]: wJalrXUtnFEMI/K7MDENG/bPxRfiCYEXAMPLEKEY

Default region name [None]: us-west-2

Default output format [None]: json

The AWS CLI will prompt you for four pieces of information. The AWS access key ID and the AWS secret

access key are your account credentials. If you don't have keys, see Sign Up for Amazon Web Services.

The default region is the name of the region you want to make calls against by default. This is usually the

region closest to you, but it can be any region.

Note

You must specify an AWS region when using the AWS CLI. For a list of services and available

regions, see Regions and Endpoints.

The default output format can be JSON, text, or table. If you don't specify an output format, JSON

is used.

For more information about the files that aws configure creates, additional settings, and named

profiles, see Configuring the AWS Command Line Interface in the AWS Command Line Interface User

Guide.
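
As a rough illustration of what this setup produces: aws configure typically stores the two keys in a credentials file and the region and output format in a config file, both in INI format under the .aws directory in your home directory. The sketch below parses text of that shape with Python's standard configparser module. The file contents are inlined as strings, using the example values shown above, so nothing is read from disk; treat the exact layout as an assumption and consult the AWS Command Line Interface User Guide for the authoritative description.

```python
import configparser

# Assumed shape of the credentials file written by aws configure.
CREDENTIALS_TEXT = """\
[default]
aws_access_key_id = AKIAIOSFODNN7EXAMPLE
aws_secret_access_key = wJalrXUtnFEMI/K7MDENG/bPxRfiCYEXAMPLEKEY
"""

# Assumed shape of the config file written by aws configure.
CONFIG_TEXT = """\
[default]
region = us-west-2
output = json
"""

credentials = configparser.ConfigParser()
credentials.read_string(CREDENTIALS_TEXT)
config = configparser.ConfigParser()
config.read_string(CONFIG_TEXT)

print(config["default"]["region"])
print(config["default"]["output"])
print(credentials["default"]["aws_access_key_id"])
```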

Perform Basic Stream Operations

This section describes basic use of a Kinesis data stream from the command line using the AWS CLI.

Be sure you are familiar with the concepts discussed in Kinesis Data Streams Key Concepts (p. 2) and

Tutorial: Visualizing Web Traffic Using Amazon Kinesis Data Streams (p. 11).

Note

After you create a stream, your account incurs nominal charges for Kinesis Data Streams usage

because Kinesis Data Streams is not eligible for the AWS free tier. When you are finished with

this tutorial, delete your AWS resources to stop incurring charges. For more information, see

Step 4: Clean Up (p. 23).

Topics

• Step 1: Create a Stream (p. 19)

• Step 2: Put a Record (p. 20)

• Step 3: Get the Record (p. 21)

• Step 4: Clean Up (p. 23)

Step 1: Create a Stream

Your first step is to create a stream and verify that it was successfully created. Use the following

command to create a stream named "Foo":

aws kinesis create-stream --stream-name Foo --shard-count 1

The parameter --shard-count is required, and for this part of the tutorial you are using one shard in

your stream. Next, issue the following command to check on the stream's creation progress:

aws kinesis describe-stream --stream-name Foo

You should get output that is similar to the following example:

{
    "StreamDescription": {
        "StreamStatus": "CREATING",
        "StreamName": "Foo",
        "StreamARN": "arn:aws:kinesis:us-west-2:account-id:stream/Foo",
        "Shards": []
    }
}

In this example, the stream has a status of CREATING, which means it is not quite ready to use. Check again

in a few moments, and you should see output similar to the following example:

{
    "StreamDescription": {
        "StreamStatus": "ACTIVE",
        "StreamName": "Foo",
        "StreamARN": "arn:aws:kinesis:us-west-2:account-id:stream/Foo",
        "Shards": [
            {
                "ShardId": "shardId-000000000000",
                "HashKeyRange": {
                    "EndingHashKey": "170141183460469231731687303715884105727",
                    "StartingHashKey": "0"
                },
                "SequenceNumberRange": {
                    "StartingSequenceNumber": "49546986683135544286507457935754639466300920667981217794"
                }
            }
        ]
    }
}

There is information in this output that you don't need to be concerned about for this tutorial. The main

thing for now is "StreamStatus": "ACTIVE", which tells you that the stream is ready to be used, and

the information on the single shard that you requested. You can also verify the existence of your new

stream by using the list-streams command, as shown here:

aws kinesis list-streams

Output:

{
    "StreamNames": [
        "Foo"
    ]
}
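
The "check again in a few moments" step earlier in this section can be automated. The following Python sketch shows one way to poll until the stream reports ACTIVE. It assumes a caller-supplied function, here called describe (a hypothetical name), that returns the JSON text printed by describe-stream; the example passes canned responses instead of calling AWS so that it runs without credentials.

```python
import json
import time


def stream_status(describe_output: str) -> str:
    """Extract StreamStatus from the JSON text printed by describe-stream."""
    return json.loads(describe_output)["StreamDescription"]["StreamStatus"]


def wait_until_active(describe, attempts=10, delay_seconds=1.0):
    """Call describe() until the stream reports ACTIVE or attempts run out."""
    for _ in range(attempts):
        if stream_status(describe()) == "ACTIVE":
            return True
        time.sleep(delay_seconds)
    return False


# Canned responses standing in for two successive describe-stream calls.
responses = iter([
    '{"StreamDescription": {"StreamStatus": "CREATING", "StreamName": "Foo", "Shards": []}}',
    '{"StreamDescription": {"StreamStatus": "ACTIVE", "StreamName": "Foo", "Shards": []}}',
])
print(wait_until_active(lambda: next(responses), delay_seconds=0))
```

The wait command in the list of available commands offers built-in polling from the CLI itself; run aws kinesis wait help to see what it supports.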

Step 2: Put a Record

Now that you have an active stream, you are ready to put some data. For this tutorial, you will use the

simplest possible command, put-record, which puts a single data record containing the text "testdata"

into the stream:

aws kinesis put-record --stream-name Foo --partition-key 123 --data testdata

This command, if successful, will result in output similar to the following example:

{
    "ShardId": "shardId-000000000000",
    "SequenceNumber": "49546986683135544286507457936321625675700192471156785154"
}

Congratulations, you just added data to a stream! Next you will see how to get data out of the stream.
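
The ShardId in the output reflects how Kinesis Data Streams routes records: the service applies an MD5 hash to the partition key to obtain a 128-bit integer, and the record goes to the shard whose HashKeyRange contains that value. The following Python sketch reproduces that mapping for the partition key 123, using the range from the describe-stream example in Step 1. The function names are hypothetical, and the sketch assumes the key is encoded as UTF-8 before hashing.

```python
import hashlib


def partition_key_to_hash_key(partition_key: str) -> int:
    """Map a partition key to a 128-bit integer via MD5 (UTF-8 encoding assumed)."""
    digest = hashlib.md5(partition_key.encode("utf-8")).digest()
    return int.from_bytes(digest, byteorder="big")


def in_range(hash_key: int, starting: str, ending: str) -> bool:
    """Check whether a hash key falls inside a shard's HashKeyRange (inclusive)."""
    return int(starting) <= hash_key <= int(ending)


hash_key = partition_key_to_hash_key("123")
print(hash_key)
print(in_range(hash_key, "0", "170141183460469231731687303715884105727"))
```

With a single shard this check is of little practical consequence, but the same comparison determines the destination shard once a stream has several.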

Step 3: Get the Record

Before you can get data from the stream you need to obtain the shard iterator for the shard you are

interested in. A shard iterator represents the position in the stream and shard from which the consumer

(the get-records command in this case) will read. You'll use the get-shard-iterator command, as

follows:

aws kinesis get-shard-iterator --shard-id shardId-000000000000 --shard-iterator-type TRIM_HORIZON --stream-name Foo

Recall that the aws kinesis commands have a Kinesis Data Streams API behind them, so if you are

curious about any of the parameters shown, you can read about them in the GetShardIterator

API reference topic. Successful execution will result in output similar to the following example (scroll

horizontally to see the entire output):

{
    "ShardIterator": "AAAAAAAAAAHSywljv0zEgPX4NyKdZ5wryMzP9yALs8NeKbUjp1IxtZs1Sp+KEd9I6AJ9ZG4lNR1EMi+9Md/nHvtLyxpfhEzYvkTZ4D9DQVz/mBYWRO6OTZRKnW9gd+efGN2aHFdkH1rJl4BL9Wyrk+ghYG22D2T1Da2EyNSH1+LAbK33gQweTJADBdyMwlo5r6PqcP2dzhg="
}

The long string of seemingly random characters is the shard iterator (yours will be different). You will

need to copy/paste the shard iterator into the get-records command, shown next. Shard iterators have a valid

lifetime of 300 seconds, which should be enough time for you to copy/paste the shard iterator into the

next command. Note that you must remove any newlines from your shard iterator before pasting it into

the next command. If you get an error message that the shard iterator is no longer valid, simply execute

the get-shard-iterator command again.
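
If the iterator picks up line breaks when copied from a wrapped terminal or document, a small helper can clean it up. The following Python sketch is illustrative only: the function names are hypothetical, it assumes a shard iterator never legitimately contains whitespace, and the expiry check simply applies the 300-second lifetime stated above to timestamps that you supply.

```python
ITERATOR_LIFETIME_SECONDS = 300  # lifetime stated in this tutorial


def clean_shard_iterator(pasted: str) -> str:
    """Remove newlines and other whitespace introduced by line wrapping."""
    return "".join(pasted.split())


def is_probably_expired(obtained_at: float, now: float) -> bool:
    """Return True once the iterator is at least as old as its stated lifetime."""
    return now - obtained_at >= ITERATOR_LIFETIME_SECONDS


# A fragment of the example iterator, broken across two lines.
wrapped = "AAAAAAAAAAHSywljv0zEgPX4NyKdZ5wryMzP9yALs8NeKbUjp1IxtZs1Sp\n+KEd9I6AJ9ZG4lNR1EMi+9Md"
print(clean_shard_iterator(wrapped))
print(is_probably_expired(obtained_at=0.0, now=301.0))
```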

The get-records command gets data from the stream, and it resolves to a call to GetRecords in the

Kinesis Data Streams API. The shard iterator specifies the position in the shard from which you want to

start reading data records sequentially. If there are no records available in the portion of the shard that

the iterator points to, GetRecords returns an empty list. Note that it might take multiple calls to get to

a portion of the shard that contains records.
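
The "multiple calls" behavior can be sketched as a loop that keeps following the iterator returned by each call until records appear. In the Python sketch below, get_records is a hypothetical caller-supplied function that takes a shard iterator and returns a dictionary shaped like a GetRecords response (the Records and NextShardIterator field names follow the API; the iterator values and the canned responses are made up so that the example runs without AWS access).

```python
import base64


def read_until_records(get_records, shard_iterator, max_calls=5):
    """Follow NextShardIterator until a call returns records or max_calls is reached."""
    for _ in range(max_calls):
        response = get_records(shard_iterator)
        if response["Records"]:
            return response["Records"]
        shard_iterator = response.get("NextShardIterator")
        if shard_iterator is None:
            break  # no further iterator, for example because the shard was closed
    return []


# Canned responses standing in for successive GetRecords calls.
FAKE_RESPONSES = {
    "iter-0": {"Records": [], "NextShardIterator": "iter-1"},
    "iter-1": {"Records": [], "NextShardIterator": "iter-2"},
    "iter-2": {"Records": [{"PartitionKey": "123", "Data": "dGVzdGRhdGE="}],
               "NextShardIterator": "iter-3"},
}

records = read_until_records(FAKE_RESPONSES.__getitem__, "iter-0")
# The CLI shows record data base64-encoded; decode it to recover the text.
print(base64.b64decode(records[0]["Data"]).decode("utf-8"))
```

Capping the number of calls keeps the loop from spinning indefinitely on a shard that has no data; a real consumer would usually also pause between calls.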

The following is an example of the get-records command (scroll horizontally to see the entire command):

aws kinesis get-records --shard-iterator AAAAAAAAAAHSywljv0zEgPX4NyKdZ5wryMzP9yALs8NeKbUjp1IxtZs1Sp+KEd9I6AJ9ZG4lNR1EMi+9Md/nHvtLyxpfhEzYvkTZ4D9DQVz/mBYWRO6OTZRKnW9gd+efGN2aHFdkH1rJl4BL9Wyrk+ghYG22D2T1Da2EyNSH1+LAbK33gQweTJADBdyMwlo5r6PqcP2dzhg=

If you are running this tutorial from a Unix-type command processor such as bash, you can automate the

acquisition of the shard iterator using a nested command, like this (scroll horizontally to see the entire

command):

SHARD_ITERATOR=$(aws kinesis get-shard-iterator --shard-id shardId-000000000000 --shard-iterator-type TRIM_HORIZON --stream-name Foo --query 'ShardIterator')

aws kinesis get-records --shard-iterator $SHARD_ITERATOR

If you are running this tutorial from a system that supports PowerShell, you can automate acquisition of