[MSP] Developer's Guide To Microsoft Enterprise Library [C# Edition]

User Manual:

Open the PDF directly: View PDF ![]() .

.

Page Count: 256 [warning: Documents this large are best viewed by clicking the View PDF Link!]

- Contents

- Welcome to the Library

- Much ADO about Data Access

- Error Management Made Exceptionally Easy

- Introduction

- When Should I Use the Exception Handling Block?

- How Do I Use the Exception Handling Block?

- What Exception Policies Do I Need?

- Diving in with a Simple Example

- Wrapping an Exception

- Replacing an Exception

- Logging an Exception

- Shielding Exceptions at WCF Service Boundaries

- Handling Specific Exception Types

- Executing Code around Exception Handling

- Assisting Administrators

- Extending Your Exception Handling

- Summary

- As Easy As Falling Off a Log

- A Cache Advance for your Applications

- Banishing Validation Complication

- Relieving Cryptography Complexity

- An Authentic Approach to Token Identity

- Appendix A: Dependency Injection with Unity

- Appendix B: Dependency Injection in Enterprise Library

- Appendix C: Policy Injection in Enterprise Library

- Appendix D: Enterprise Library Configuration Scenarios

- Appendix E: Encrypting Configuration Files

- Index

’

Microsoft® Enterprise

Library

Solutions for Enterprise Development

Alex Homer

with

Nicolas Botto

Bob Brumfield

Grigori Melnik

Erik Renaud

Fernando Simonazzi

Chris Tavares

Copyright and Terms of Use

ISBN: 9780735645233

This document is provided “as-is.” Information and views expressed in

this document, including URL and other Internet Web site references,

may change without notice. You bear the risk of using it.

Some examples depicted herein are provided for illustration only and

are fictitious. No real association or connection is intended or should

be inferred.

This document does not provide you with any legal rights to any

intellectual property in any Microsoft product. You may copy and

use this document for your internal, reference purposes.

© 2010 Microsoft. All rights reserved.

Microsoft, Windows, Windows Server, Windows Vista, Visual C#,

SQL Server, Active Directory, IntelliSense, Silverlight, MSDN, Internet

Explorer, and Visual Studio are trademarks of the Microsoft group of

companies. All other trademarks are property of their respective owners.

1 Welcome to the Library 1

Meet the Librarian 1

What You Get with Enterprise Library 1

Things You Can Do with Enterprise Library 3

Why You Should Use Enterprise Library 4

Some Fundamentals of Enterprise Library 6

Choosing Which Blocks To Install 6

Installing Enterprise Library 7

Assemblies And References 7

GAC or Bin, Signed or Unsigned? 8

Importing Namespaces 9

Configuring Enterprise Library 10

The Configuration Tools 10

Using The Configuration Tools 11

Encrypting Configuration Sections 14

Instantiating and Using Enterprise Library Objects 14

Enterprise Library Objects, Facades, and Factories 15

Creating Instances of Enterprise Library Types 15

The Simple Approach

— Using The Enterprise Library Service Locator 16

The Sophisticated Approach

— Accessing The Container Directly 16

Pros and Cons of Object Instantiation 18

More Reasons to be Sophisticated 19

Getting Objects From Previous Versions

Of Enterprise Library 21

The Example Applications 22

Summary 23

2 Much ADO about Data Access 25

Introduction 25

What Does the Data Access Application Block Do? 26

Data Operations Supported by the Data Access Block 26

How Do I Use the Data Access Block? 28

Configuring the Block and Referencing the Required Assemblies 28

Creating Database Instances 29

The Example Application 30

Reading Multiple Data Rows 31

Reading Rows Using a Query with No Parameters 31

Reading Rows Using an Array of Parameter Values 32

Reading Rows Using Queries with Named Parameters 33

Retrieving Data as Objects 35

About Accessors 35

Creating and Executing an Accessor 37

Creating and Using Mappers 38

Retrieving Xml Data 39

Retrieving Single Scalar Values 40

Retrieving Data Asynchronously 41

Preparing for Asynchronous Data Access 42

Retrieving Row Set Data Asynchronously 43

Retrieving Data as Objects Asynchronously 45

Updating Data 45

Executing an Update Query 46

Working with DataSets 47

Updating the Database from a DataSet 48

Managing Connections 52

Working with Connection-Based Transactions 53

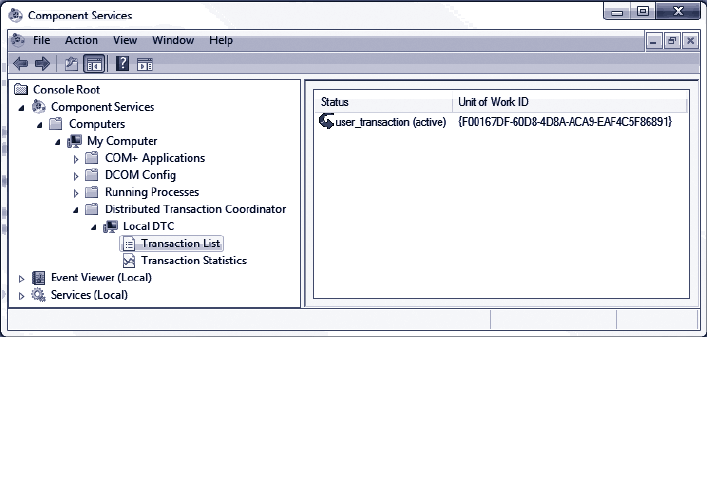

Working with Distributed Transactions 55

Extending the Block to Use Other Databases 58

Summary 58

3 Error Management Made Exceptionally Easy 61

Introduction 61

When Should I Use the Exception Handling Block? 62

How Do I Use the Exception Handling Block? 62

What Exception Policies Do I Need? 63

Allowing Exceptions to Propagate 63

About Exception Handling Policies 63

Choosing an Exception Handling Strategy 65

Process or Handle Exception? 66

Using the Process Method 67

Diving in with a Simple Example 68

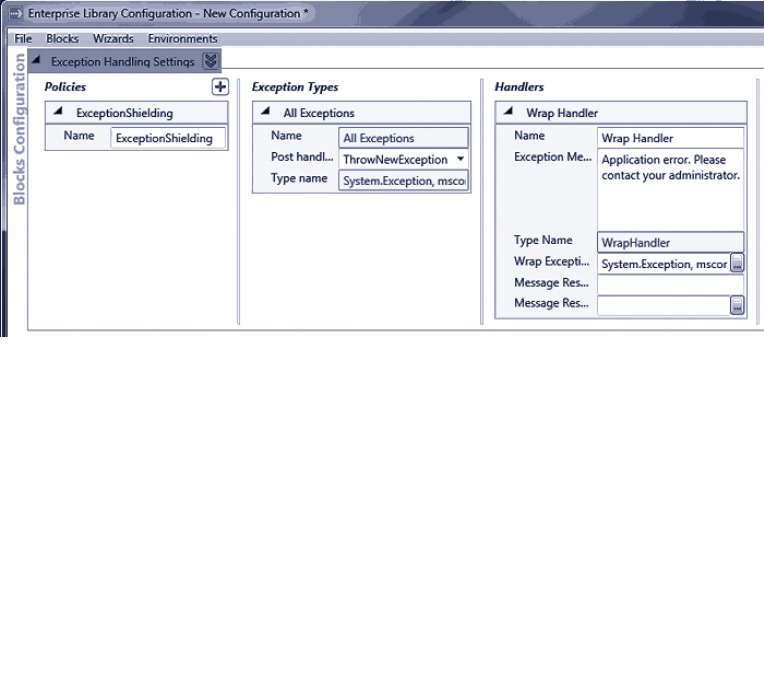

Applying Exception Shielding 69

Wrapping an Exception 70

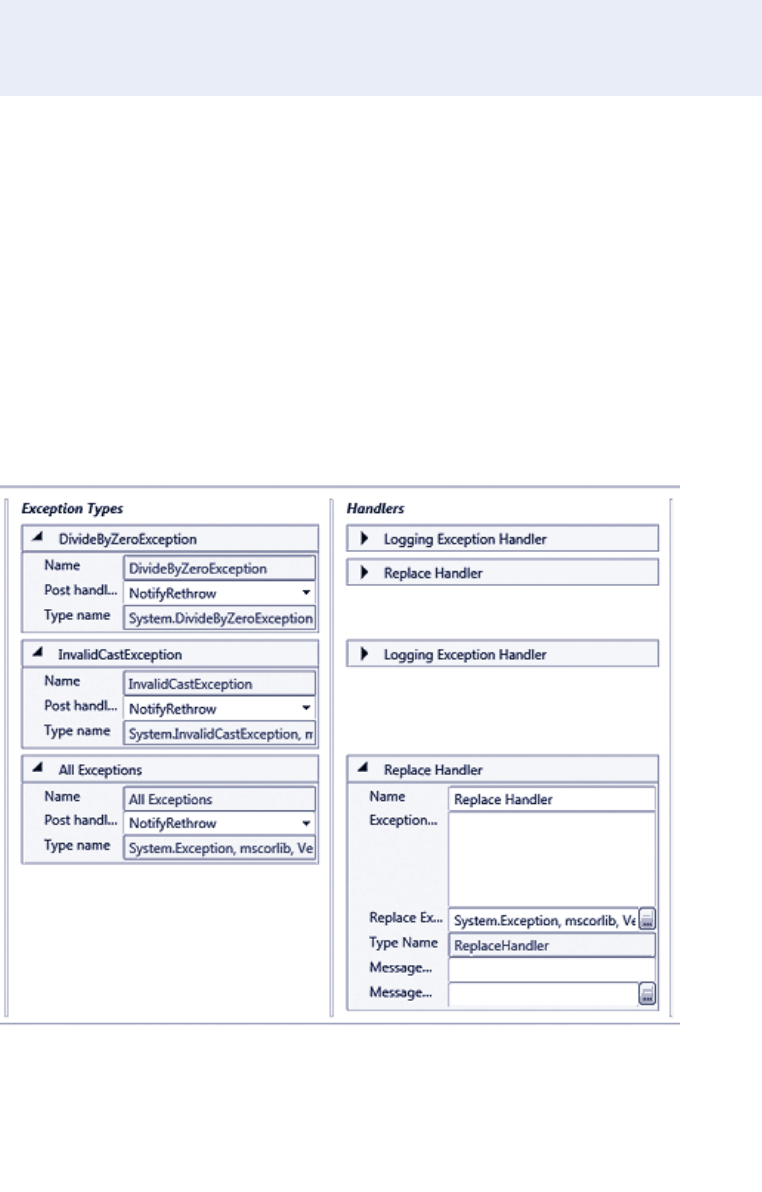

Configuring the Wrap Handler Policy 70

Initializing the Exception Handling Block 71

Editing the Application Code to Use the New Policy 71

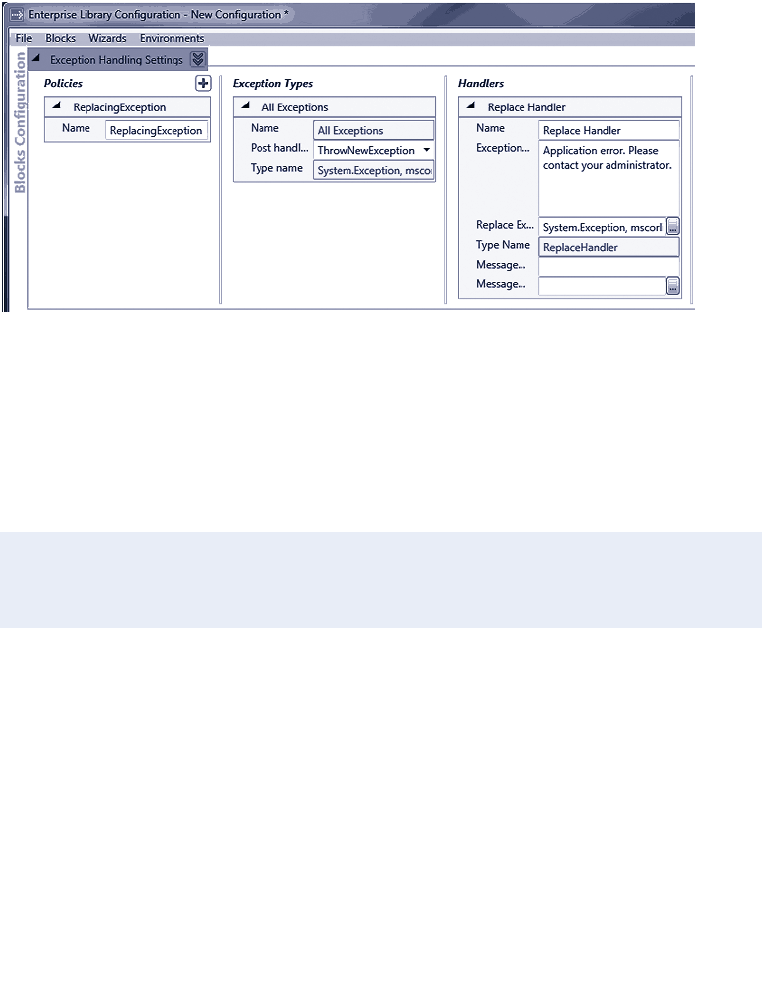

Replacing an Exception 74

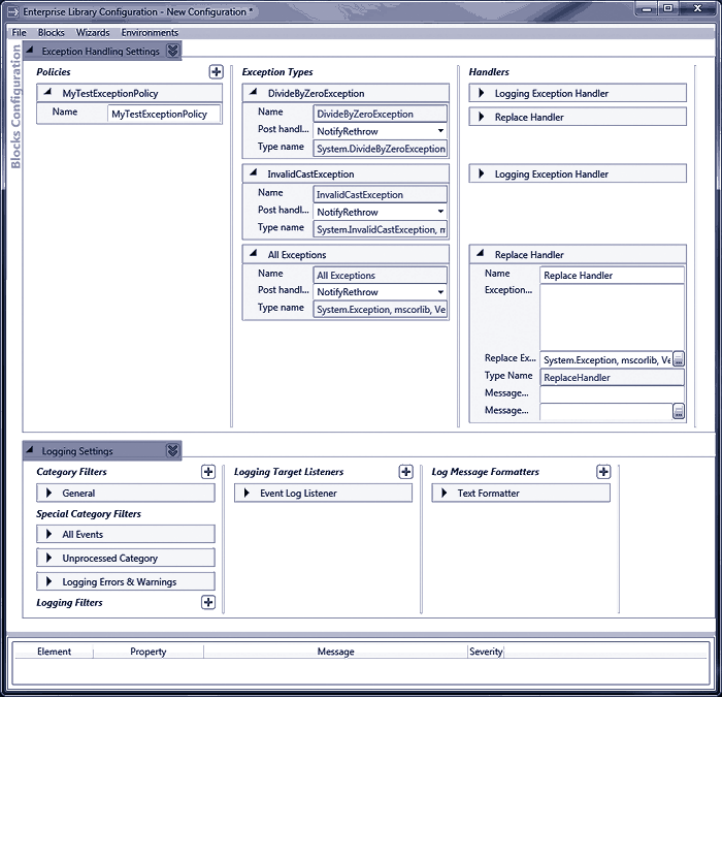

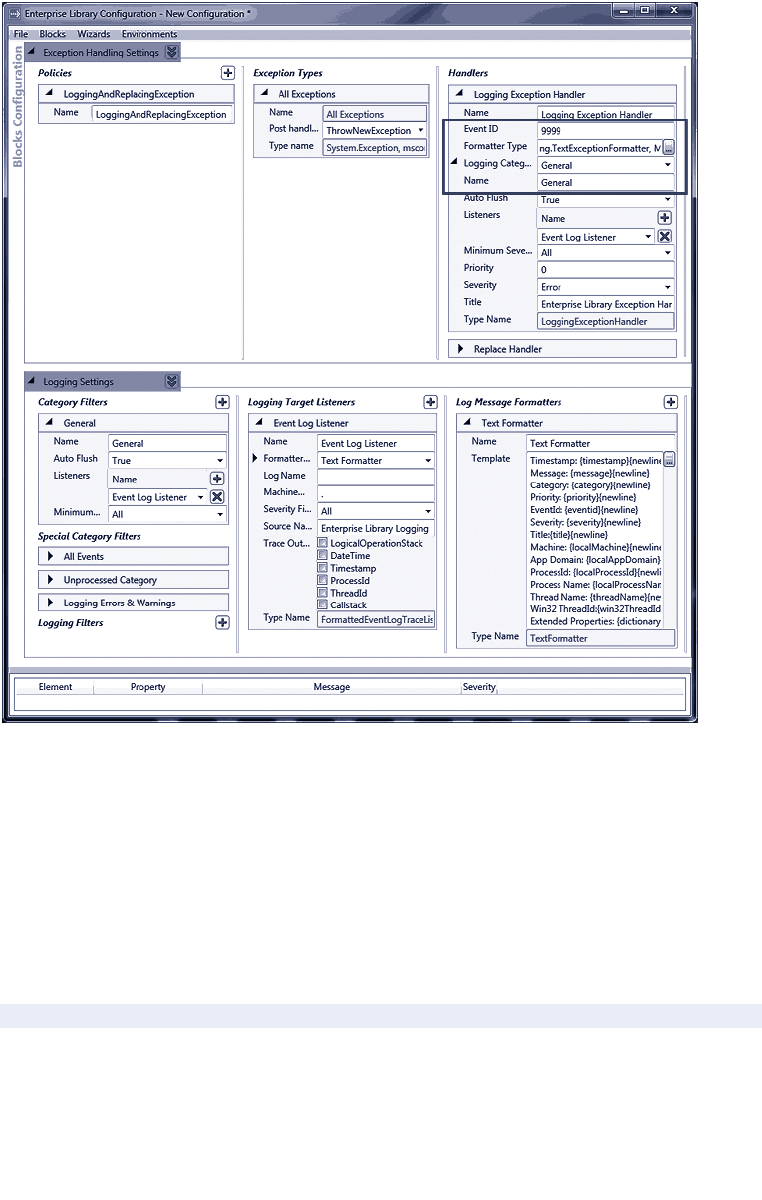

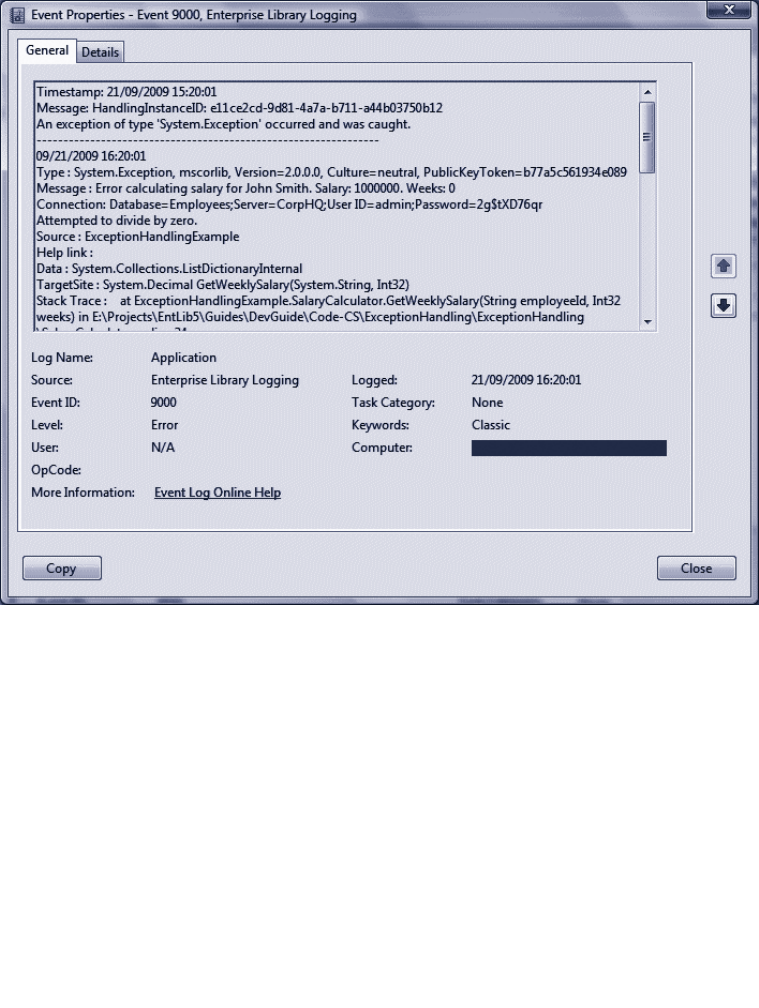

Logging an Exception 75

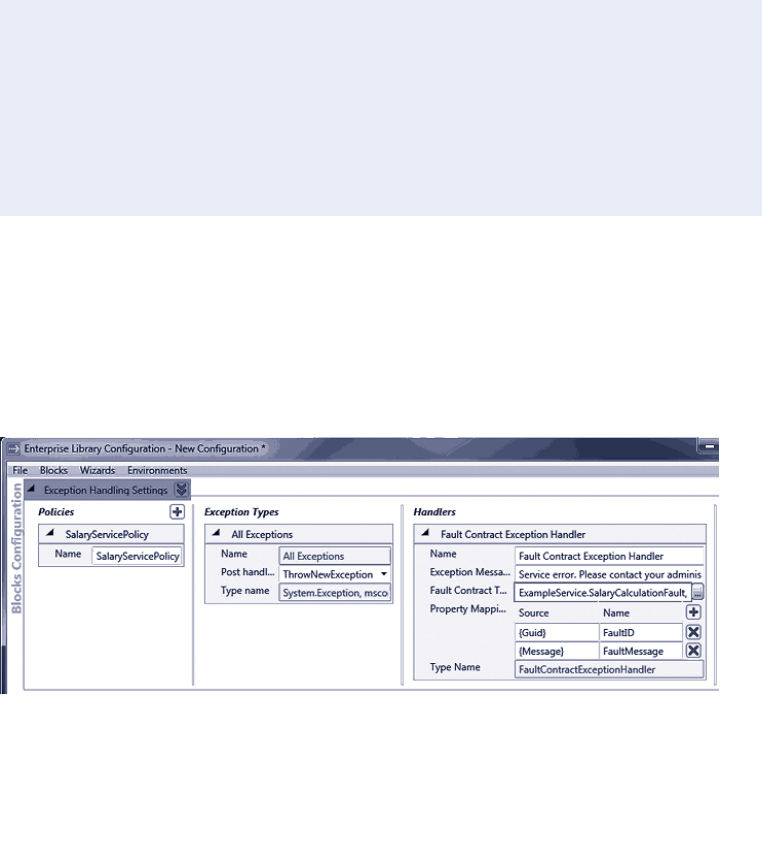

Shielding Exceptions at WCF Service Boundaries 78

Creating a Fault Contract 78

Configuring the Exception Handling Policy 78

Editing the Service Code to Use the New Policy 79

The Fault Contract Exception Handler 80

Handling Specific Exception Types 81

Executing Code around Exception Handling 82

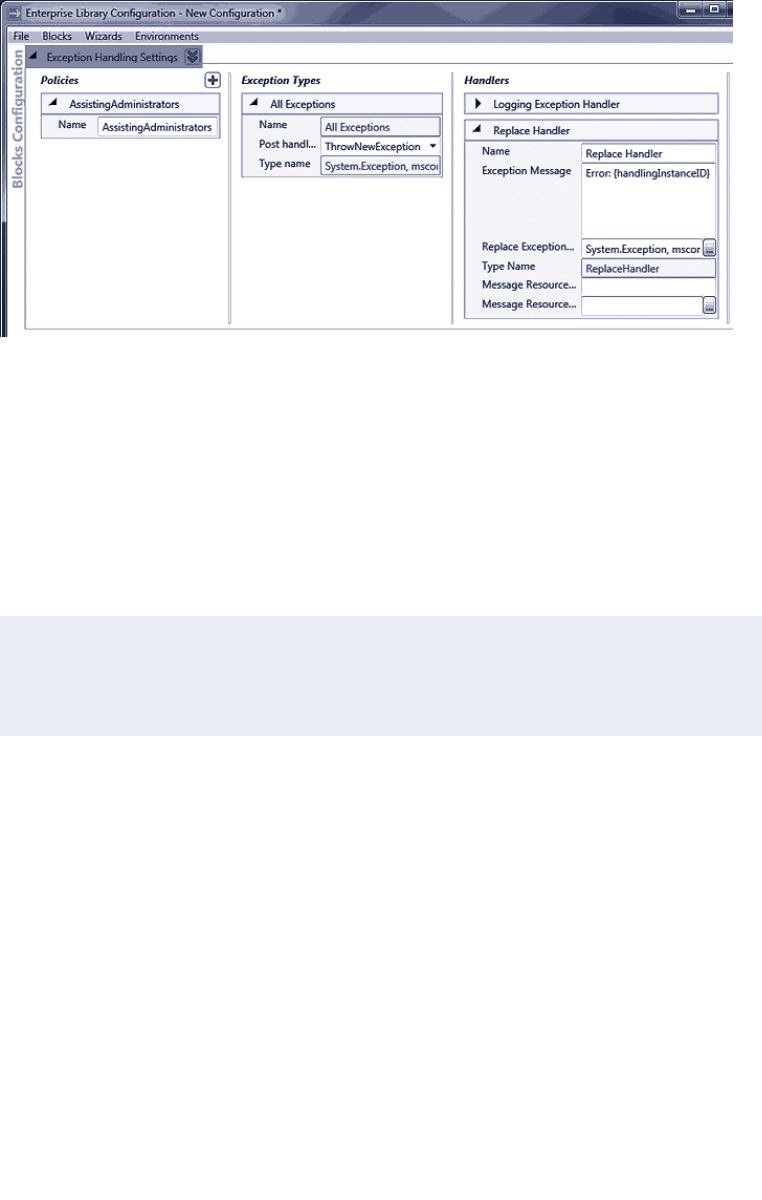

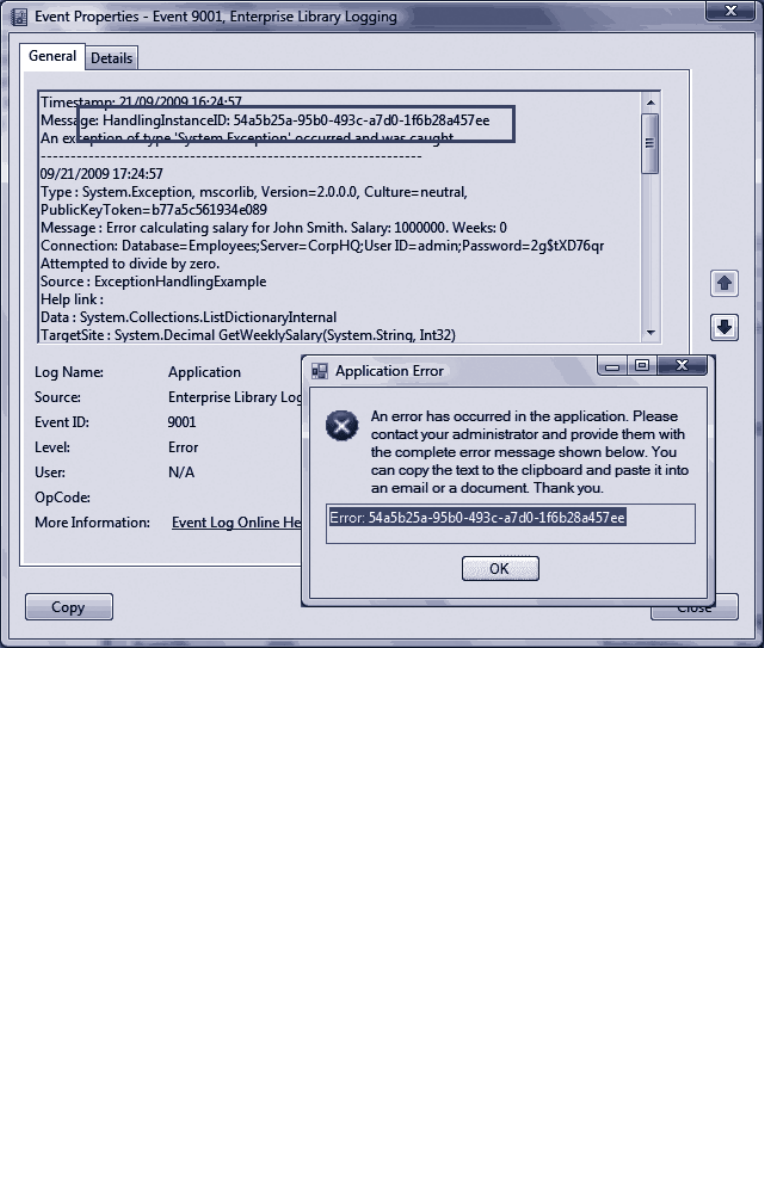

Assisting Administrators 84

Extending Your Exception Handling 87

Summary 87

4 As Easy as Falling Off a Log 89

Introduction 89

What Does the Logging Block Do? 90

Logging Categories 92

Logging Overhead and Additional Context Information 93

How Do I Use the Logging Block? 93

Configuring the Logging Block 93

Initializing the Logging Block 94

Diving In with an Example 95

Creating and Writing Log Entries with a Logwriter 95

About Logging Categories 98

Filtering by Category 100

Writing Log Entries to Multiple Categories 100

Controlling Output Formatting 101

Non-Formatted Trace Listeners 102

Filtering by Severity in a Trace Listener 103

Filtering All Log Entries by Priority 103

Creating and Using Logentry Objects 104

Capturing Unprocessed Events and Logging Errors 105

About Special Sources 105

An Example of Using Special Sources 106

Logging to a Database 108

Using the Database Trace Listener 109

Testing Logging Filter Status 110

Obtaining Information about Trace Sources

and Trace Listeners 111

Checking if Filters Will Block a Log Entry 112

Adding Additional Context Information 114

Tracing and Correlating Activities 115

An Example of Tracing Activities 116

Creating Custom Trace Listeners, Filters, and Formatters 119

Summary 119

5 A Cache Advance for Your Applications 121

Introduction 121

What Does the Caching Block Do? 123

Flushed or Expired? 123

Which Expiration Policy? 124

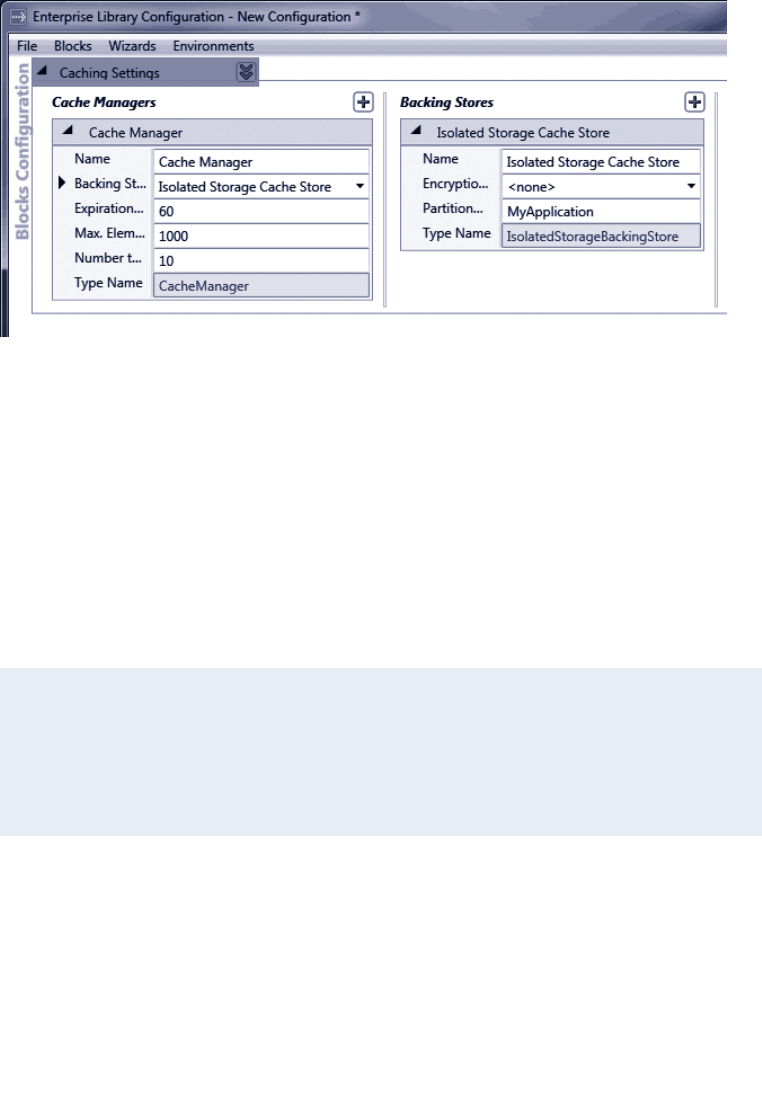

How Do I Configure the Caching Block? 124

Persistent Caching 125

Encrypting Cached Items 126

Initializing the Caching Block 126

How Do I Use the Caching Block? 127

About the Example Application 127

Adding Items to and Retrieving Items from the Cache 127

What’s In My Cache? 130

Using the Isolated Storage Backing Store 131

Encrypting the Cached Data 133

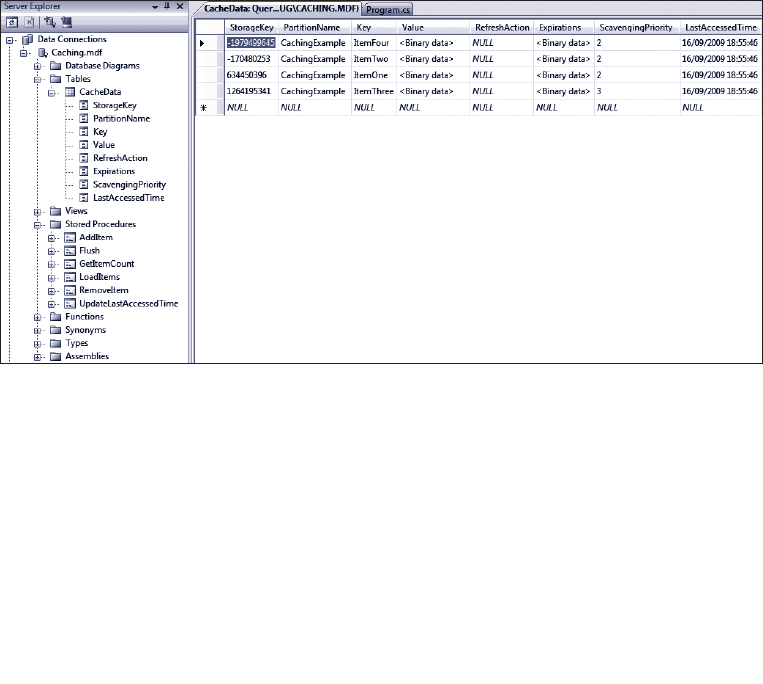

Using the Database Backing Store 134

Removing Items From and Flushing the Cache 135

Using a File Dependency and Extended Time Expiration 136

Adding the Items to the Cache 137

Refreshing the Cache 139

Loading the Cache 141

Proactive Cache Loading 141

Reactive Cache Loading 142

Extending Your Cache Advance 143

Summary 144

6 Banishing Validation Complication 145

Introduction 145

Techniques for Validation 146

Where Should I Validate? 146

What Should I Validate? 146

How Should I Validate? 147

What Does the Validation Block Do? 147

The Range of Validators 149

Validating with Attributes 151

DataAnnotations Attributes 151

Self-Validation 152

Validation Rule Sets 154

Assigning Validation Rules to Rule Sets 154

Configuring Validation Block Rule Sets 154

Specifying Rule Sets When Validating 155

How Do I Use The Validation Block? 156

Preparing Your Application 156

Choosing a Validation Approach 157

Options for Creating Validators Programmatically 158

Performing Validation and Displaying Validation Errors 159

Understanding Message Template Tokens 160

Diving in With Some Simple Examples 161

Validating Objects and Collections of Objects 162

Creating a Type Validator using the ValidatorFactory 162

Delving Deeper into ValidationResults 163

Using the Object Validator 164

Differences Between the Object Validator

and the Factory-Created Type Validators 165

Validating Collections of Objects 165

Using Validation Attributes 166

Using the Validation Block Attributes 166

Using Data Annotation Attributes 169

Defining Attributes in Metadata Classes 171

Specifying the Location of Validation Rules 172

Creating and Using Individual Validators 173

Validating Strings for Contained Characters 173

Validating Integers within a Domain 173

Validating with a Composite Validator 174

Validating Single Members of an Object 175

WCF Service Validation Integration 176

Defining Validation in the Service Contract 176

Editing the Service Configuration 177

Using the Product Service and Detecting Validation Errors 178

User Interface Validation Integration 180

ASP.NET User Interface Validation 180

Windows Forms User Interface Validation 181

WPF User Interface Validation 181

Creating Custom Validators 182

Summary 182

7 Relieving Cryptography Complexity 183

Introduction 183

What Does the Cryptography Block Do? 183

A Secret Shared 184

Making a Hash of It 184

How Does the Cryptography Block Manage

Encryption Keys? 184

How Does the Cryptography Block Integrate

With Other Blocks? 185

How Do I Use the Cryptography Block? 185

Configuring Cryptographic Providers 186

Adding the Required References 187

Diving in with an Example 187

Encrypting and Decrypting Data Using

A Symmetric Provider 188

Encrypting and Decrypting a Text String 188

Encrypting and Decrypting an Object Instance 189

Obtaining and Comparing Hash Values 191

Creating and Comparing Hash Values for Text Strings 191

Creating and Comparing Hash Values for Object Instances 193

Creating Custom Cryptography Providers 195

Summary 196

8 An Authentic Approach to Token Identity 197

Introduction 197

What Does the Security Block Do? 198

What are Authorization Rule Providers? 198

About Authorization Manager (AzMan) 198

Why Do I Need a Security Cache? 199

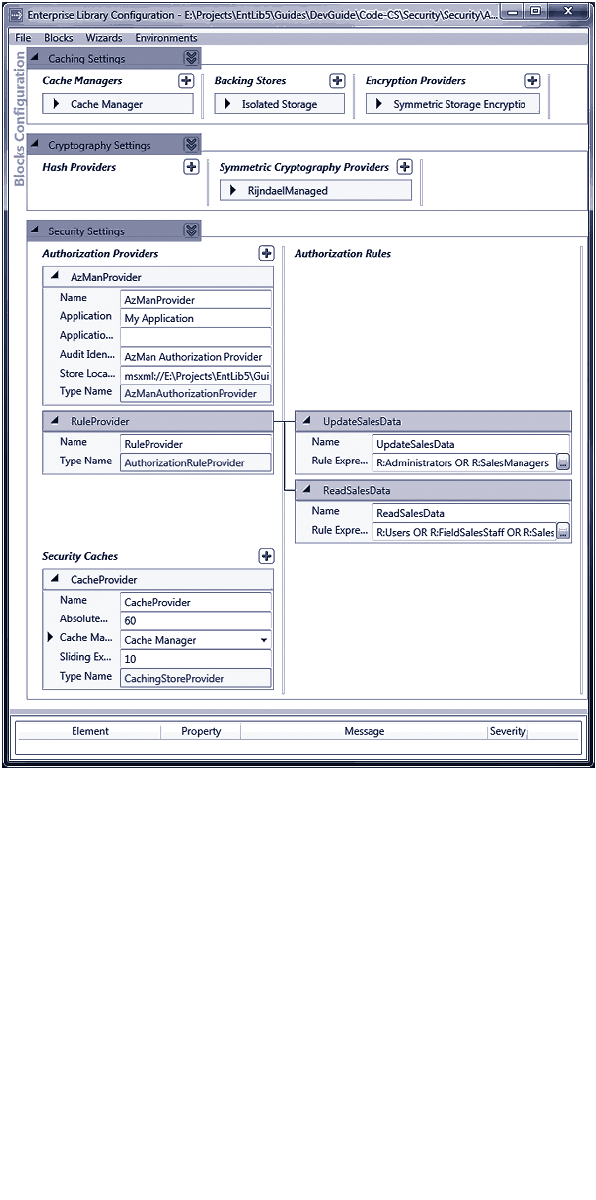

How Do I Configure the Security Block? 200

Configuring Authorization Rules 202

How Do I Use the Security Block? 202

Diving in With an Example 203

Caching a User Identity and Obtaining

A Temporary Token 204

Displaying User Identity Details 205

Displaying Generic Principal Details 205

Authenticating a User Using a Token 206

Terminating a User Session and Expiring the Token 207

Checking If a User is Authorized to Perform a Task 208

Using Security Block Configured Rules 208

Using AzMan Provider Rules 210

Creating Custom Authorization Providers 212

Summary 212

213

What is Dependency Injection? 213

The Unity Dependency Injection and Interception Mechanism 214

Summary of Unity Features 215

Defining Dependencies with Unity 216

Constructor Injection 217

Automatic Constructor Injection 217

Design-Time Configuration 218

Run-Time Configuration 219

Configuration with Attributes 219

Property (Setter) Injection 220

Design-Time Configuration 220

Run-Time Configuration 221

Configuration with Attributes 221

Method Call Injection 222

Design-Time Configuration 222

Run-Time Configuration 223

Configuration with Attributes 223

More Dependency Injection Opportunities 224

Resolving Populated Instances of Your Classes 224

225

Loading Configuration Information into a Unity Container 225

Viewing Registrations in the Container 226

Populating Entire Object Graphs at Application Startup 227

Maintaining a Container Reference in Request-Based

Applications 228

Using an Alternative Service Locator or Container 229

231

233

About Enterprise Library Configuration 233

External Configuration 234

Programmatic Support 234

Using the Fluent Interfaces 234

Scenarios for Advanced Configuration 235

: Using The Default Application

Configuration File 235

: Using A Non-Default Configuration Store 235

: Sharing The Same Configuration

Between Multiple Applications 236

: Managing And Enforcing Configuration

For Multiple Applications 236

: Sharing Configuration Sections

Across Multiple Applications 237

: Applying a Common Configuration

Structure for Applications 238

Deployment Environments 239

241

1

Meet the Librarian

Before we begin our exploration of Microsoft® Enterprise Library and the wondrous

range of capabilities and opportunities it encompasses, you need to meet the Librarian.

Sometimes we call him Tom, sometimes we call him Chris, and sometimes we call him

Grigori. But, despite this somewhat unnerving name variability, he—in collaboration with

an advisory board of experts from the industry and other internal Microsoft product

groups, and a considerable number of other community contributors—is the guardian and

protector of the Microsoft Enterprise Library.

Since its inception as a disparate collection of individual application blocks, the Li-

brarian has guided, prodded, inspired, and encouraged his team to transform it into a

comprehensive, powerful, easy-to-use, and proven library of code that can help to mini-

mize design and maintenance pain, maximize development productivity, and reduce costs.

And now in version 5.0, it contains even more built-in goodness that should make your

job easier. It’s even possible that, with the time and effort you will save, Enterprise Library

can reduce your golf handicap, help you master the ski slopes, let you spend more time

with your kids, or just make you a better person. However, note that the author, the

publisher, and their employees cannot be held responsible if you just end up watching

more TV or discovering you actually have a life.

What You Get with Enterprise Library

Enterprise Library is made up of a series of application blocks, each aimed at managing

specific crosscutting concerns. In case this concept is unfamiliar, crosscutting concerns

are those annoying tasks that you need to accomplish in several places in your application.

When trying to manage crosscutting concerns there is often the risk that you will imple-

ment slightly different solutions for each task at each location in your application, or that

you will just forget them altogether. Writing entries to a system log file or Windows®

Event Log, caching data, and validating user input are typical crosscutting concerns. While

there are several approaches to managing them, the Enterprise Library application blocks

make it a whole lot easier by providing generic and configurable functionality that you

can centralize and manage.

Welcome to the Library 1

2 ch a p ter one

What are application blocks? The definition we use is “pluggable and reusable

software components designed to assist developers with common enterprise development

challenges.” Application blocks help address the kinds of problems developers commonly

face from one line-of-business project to the next. Their design encapsulates the

Microsoft recommended practices for Microsoft .NET Framework-based applications,

and developers can add them to .NET-based applications and configure them quickly

and easily.

As well as the application blocks, Enterprise Library contains configuration tools, plus

a set of core functions that manage tasks applicable to all of the blocks. Some of

these functions—routines for handling configuration and serialization, for example—are

exposed and available for you to use in your own applications.

And, on the grounds that you need to learn how to use any new tool that is more

complicated than a hammer or screwdriver, Enterprise Library includes a range of sample

applications, descriptions of key scenarios for each block, hands-on labs, and comprehen-

sive reference documentation. You even get all of the source code and the unit tests that

the team created when building each block (the team follows a test-driven design

approach by writing tests before writing code). So you can understand how it works, see

how the team followed good practices to create it, and then modify it if you want it to

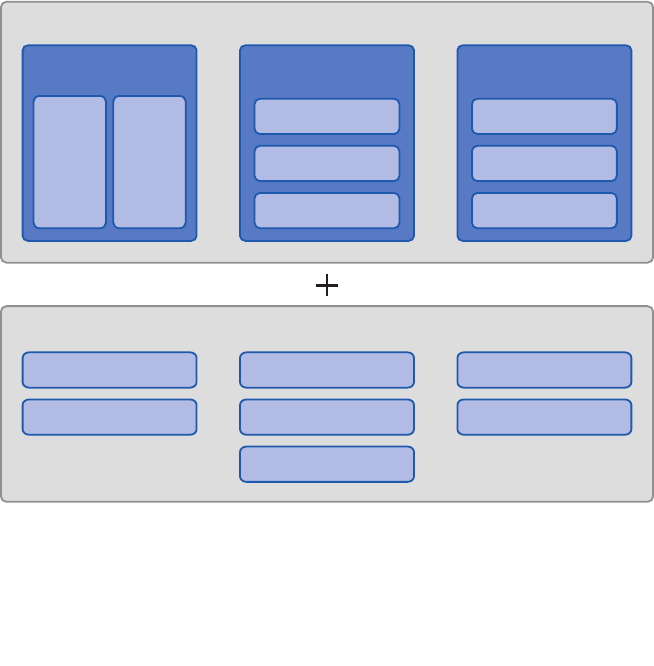

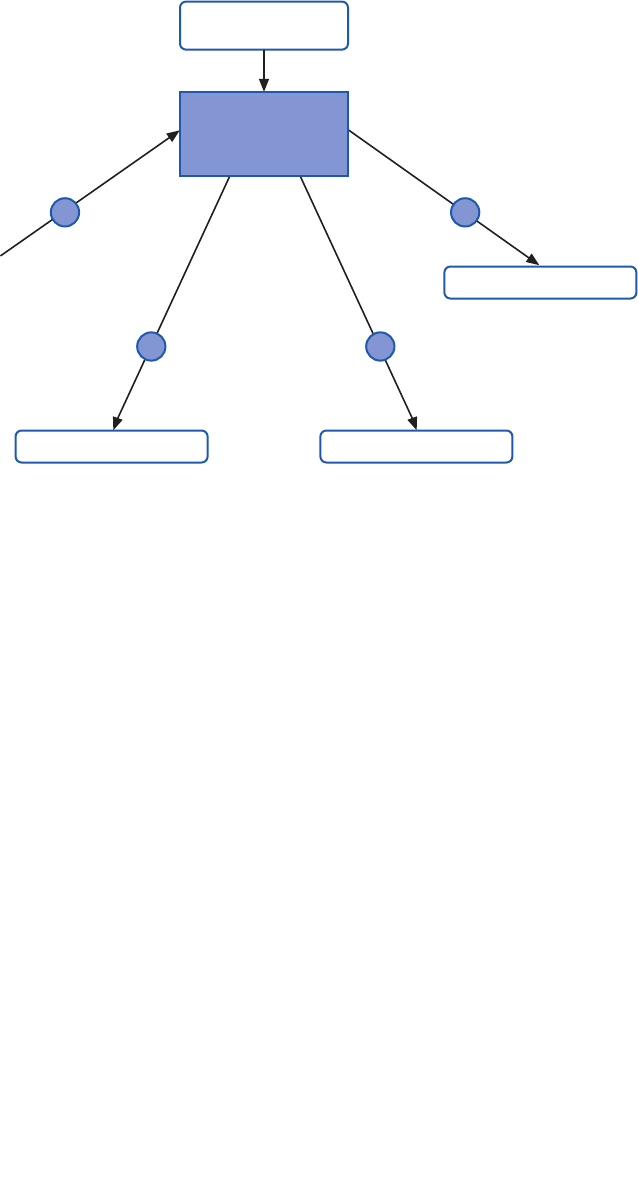

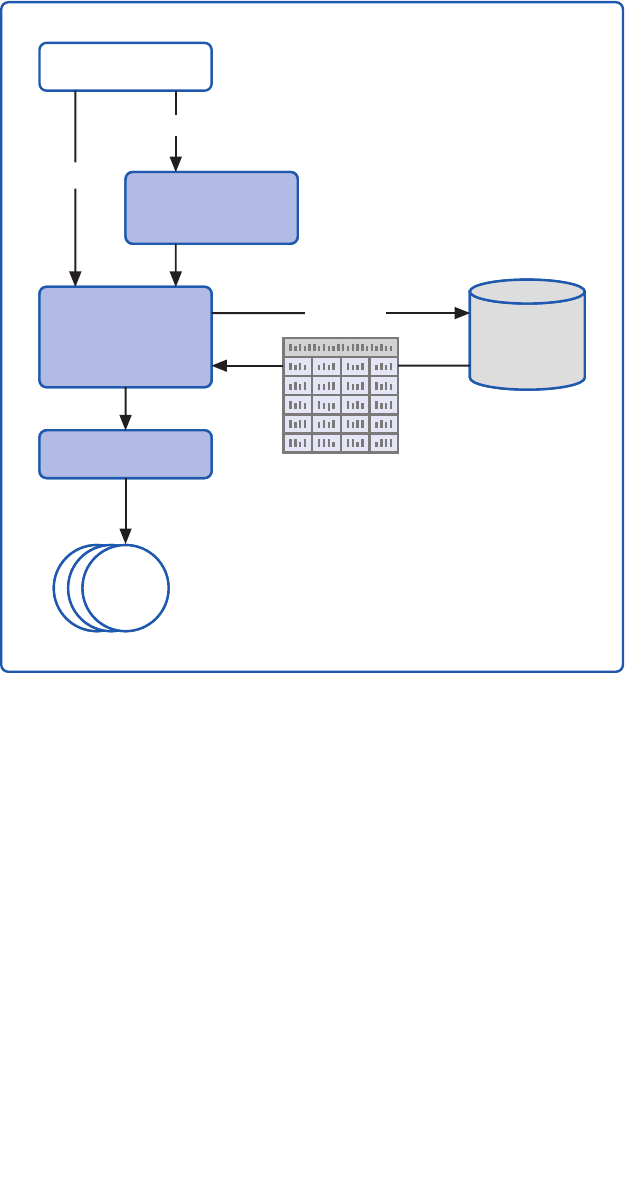

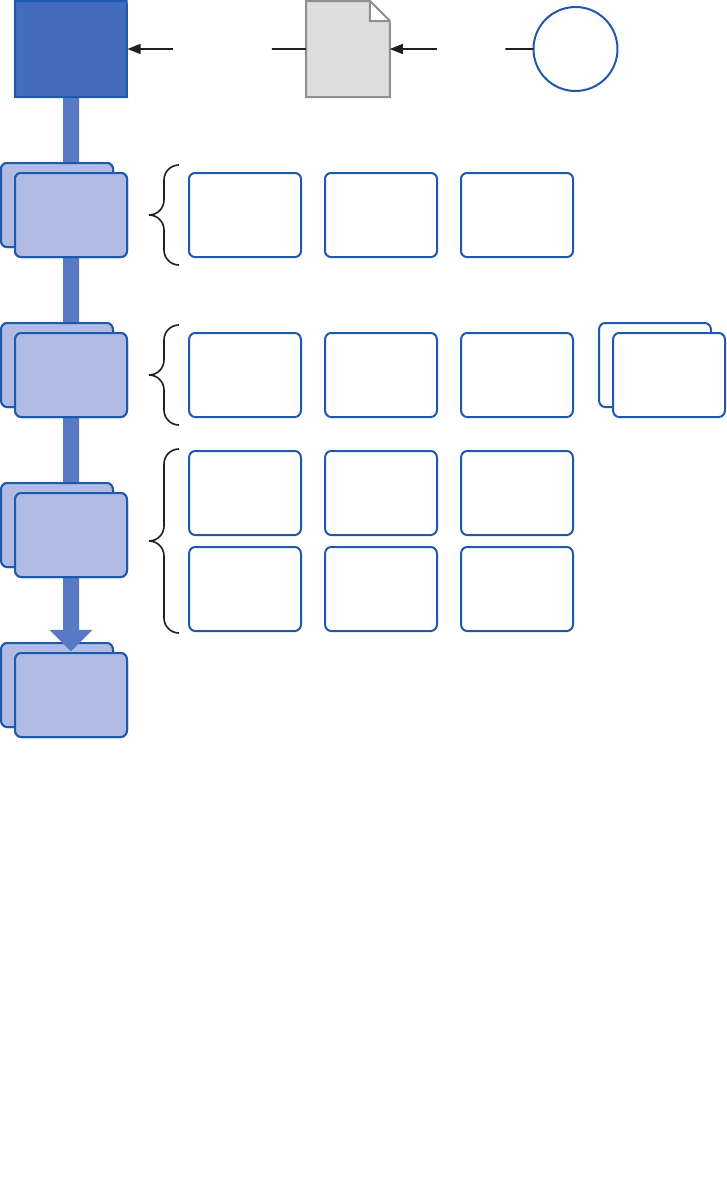

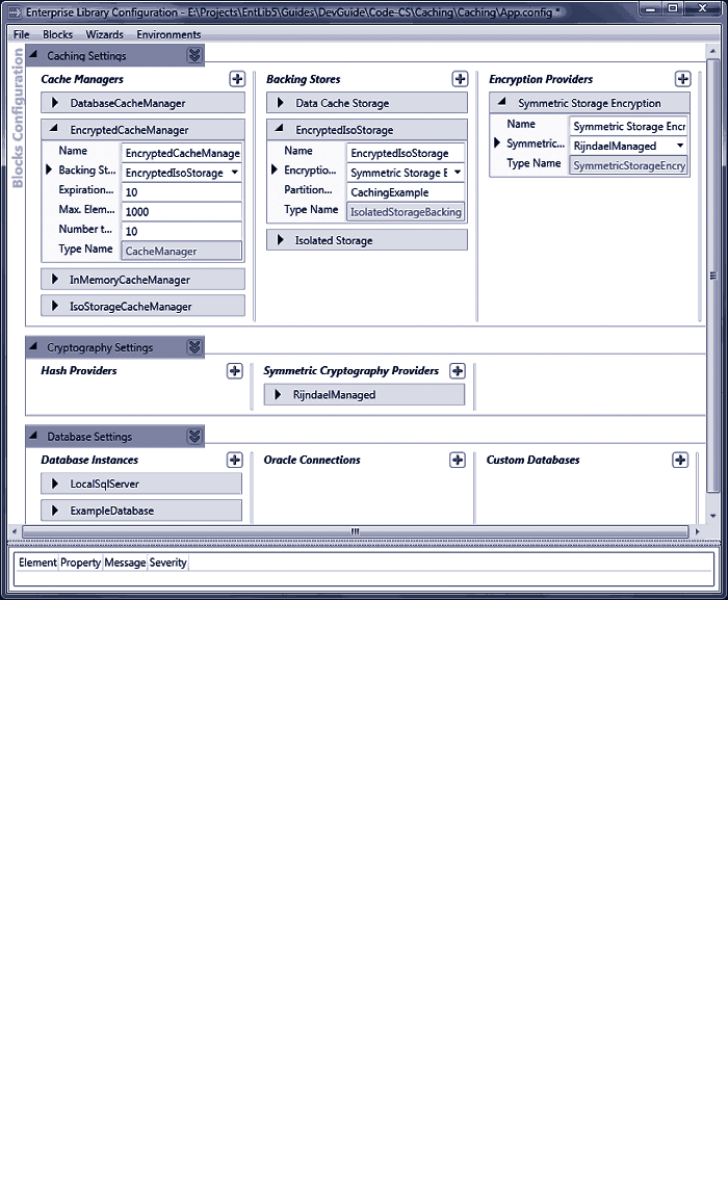

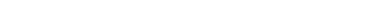

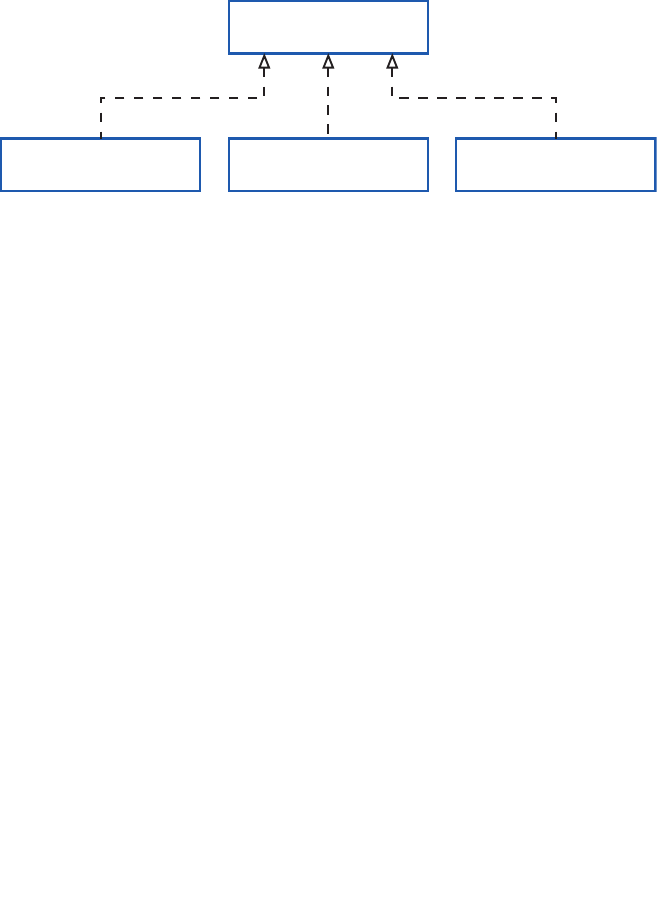

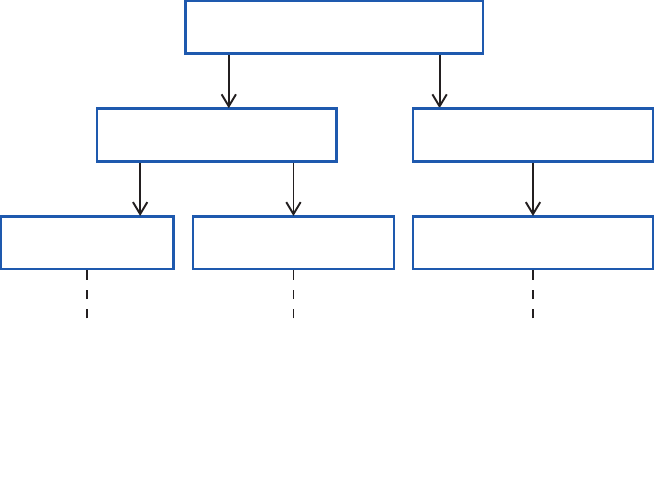

do something different. Figure 1 shows the big picture for Enterprise Library.

Enterprise Library—the big picture

Enterprise Library Big Picture

In the Box

Run time Design time Guidance

Functional

Blocks

Wiring

Blocks

Configuration Console

Configuration Schema

Visual Studio

Integration

Conceptual

Documentation

API Reference

Documentation

Source Code (with tests)

Developer’s Guide

Migration Guide

Hands on Labs

CodePlex Support Forum

EntLibContrib

Community Extensions

Videos & Demos

FAQs

Ancillary

3

Things You Can Do with Enterprise Library

If you look at the installed documentation, you’ll see that Enterprise Library today actu-

ally contains nine application blocks. However, there are actually only seven blocks that

“do stuff”—these are referred to as functional blocks. The other two are concerned with

“wiring up stuff” (the wiring blocks). What this really means is that there are seven blocks

that target specific crosscutting concerns such as caching, logging, data access, and valida-

tion. The other two, the Unity Dependency Injection mechanism and the Policy Injection

Application Block, are designed to help you implement more loosely coupled, testable,

and maintainable systems. There’s also some shared core pieces used in all the blocks. This

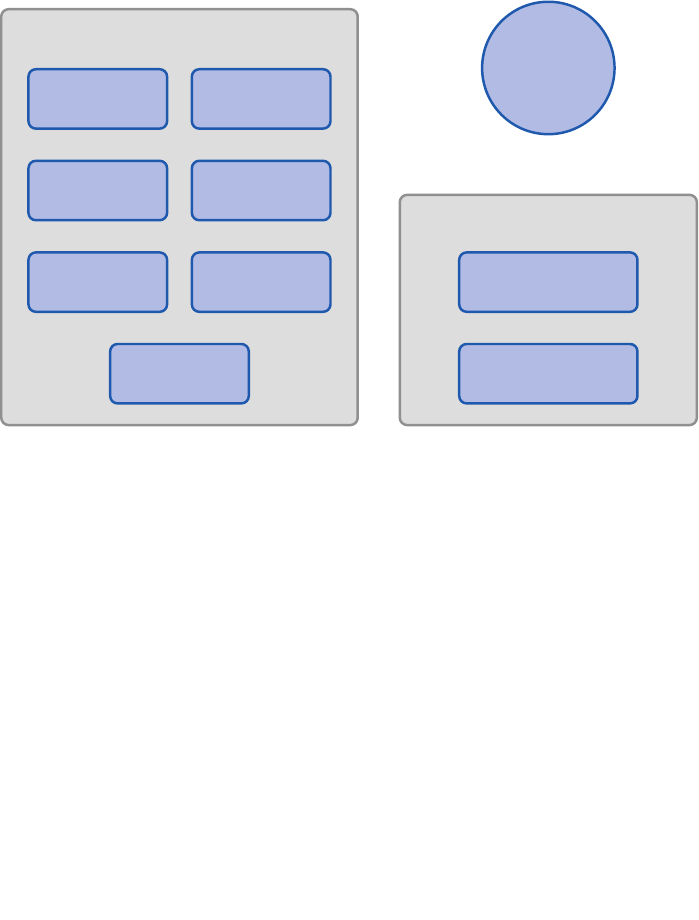

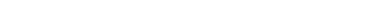

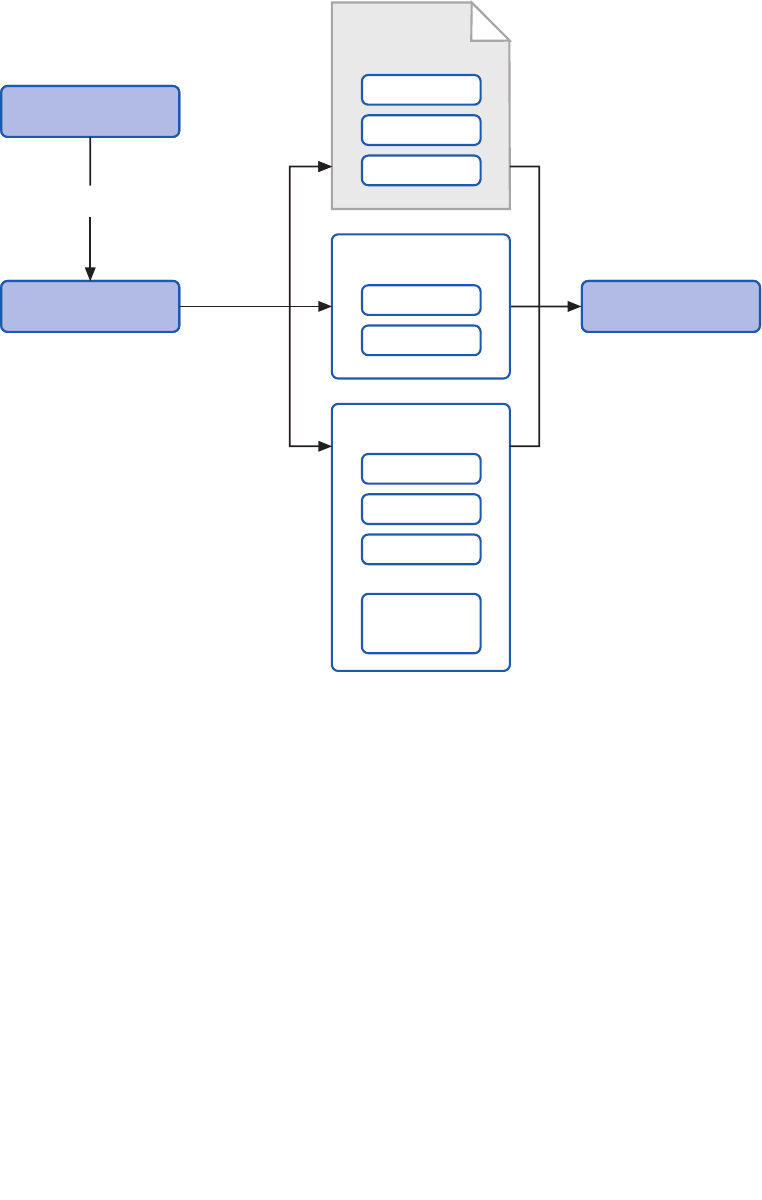

is shown in Figure 2.

The parts of Enterprise Library

In this book we’ll be concentrating on the seven functional blocks. If you want to know

more about how you can use Unity and the Policy Injection Application Block, check out

the appendices for this guide. They describe the capabilities of Unity as a dependency

injection mechanism and the use of policy injection in more detail.

The following list describes the crosscutting scenarios you’ll learn about in this book:

• Caching. The Caching Application Block lets you incorporate a local cache in

your applications that uses an in-memory cache and, optionally, a database or

isolated storage backing store. The block provides all the functionality needed

to retrieve, add, and remove cached data, and supports configurable expiration

and scavenging policies. You can also extend it by creating your own pluggable

Data Access

Caching

Functional Application Blocks

Cryptography

Logging

Exception

Handling

Security

Validation Policy Injection/

Interception

Unity

Core

Wiring Application Blocks

4 ch a p ter one

providers or by using third-party providers—for example, to support distrib-

uted caching and other features. Caching can provide considerable improve-

ments in performance and efficiency in many application scenarios.

• Credential Management. The Security Application Block lets you easily

implement common authorization-related functionality, such as caching the

user’s authorization and authentication data and integrating with the Microsoft

.NET Framework security features.

• Data Access. The Data Access Application Block simplifies many common data

access tasks such as reading data for display, passing data through application

layers, and submitting changed data back to the database system. It includes

support for both stored procedures and in-line SQL, can expose the data as a

sequence of objects for client-side querying, and provides access to the most

frequently used features of ADO.NET in simple-to-use classes.

• Encryption. The Cryptography Application Block makes it easy to incorporate

cryptographic functionality such as encrypting and decrypting data, creating a

hash from data, and comparing hash values to verify that data has not been

altered. Using this block can help you avoid common pitfalls when developing

custom mechanisms that might introduce security vulnerabilities.

• Exception Handling. The Exception Handling Application Block lets you

quickly and easily design and implement a consistent strategy for managing

exceptions that occur in various architectural layers of your application. It can

log exception information, hide sensitive information by replacing the original

exception with another exception, and maintain contextual information for an

exception by wrapping the original exception inside another exception.

• Logging. The Logging Application Block simplifies the implementation of

common logging functions such as writing information to the Windows Event

Log, an e-mail message, a database, Windows Message Queuing, a text file, a

Windows Management Instrumentation (WMI) event, or a custom location.

• Validation. The Validation Application Block provides a range of features for

implementing structured and easy-to-maintain validation mechanisms using

attributes and rule sets, and integrating with most types of application inter-

face technologies.

Why You Should Use Enterprise Library

As you can see from the previous section, Enterprise Library provides a comprehensive

set of features that can help you to manage your crosscutting concerns though a reusable

set of components and core functionality. Of course, like many developers, you may suf-

fer from the well-known NIH (not invented here) syndrome. But, seriously, isn’t it about

time that every developer on your team stopped writing his or her own logging frame-

work? It’s a commonly accepted fact that the use of standard and proven code libraries

and components can save development time, minimize costs, reduce the use of precious

test resources, and decrease the overall maintenance effort. In the words of the Librarian,

“These days you cannot afford not to reuse.”

5welcome to the libr a ry

You can download the Nucleus Research 2009 Report on Microsoft patterns &

practices, which reviews the key components, benefits, and includes direct feedback

from software architects and developers who have adopted patterns & practices

deliverables in their projects and products from http://msdn.microsoft.com/en-us/

practices/ee406167.aspx.

And it’s not as though Enterprise Library is some new kid on the block that might

morph into something completely different next month. Enterprise Library as a concept

has been around for many years, and has passed through five full releases of the library as

well as intermediate incremental releases.

Enterprise Library continues to evolve along with the capabilities of the .NET Frame-

work. As the .NET Framework has changed over time, some features that were part of

Enterprise Library were subsumed into the core, while Enterprise Library changed to take

advantage of the new features available in both the .NET Framework and the underlying

system. Examples include new programming language capabilities and improved perfor-

mance and capabilities in the .NET configuration and I/O mechanisms. Yet, even in version

5.0, the vast majority of the code is entirely backwards compatible with applications

written to use Enterprise Library 2.0.

You can also use Enterprise Library as learning material—not only to implement de-

sign patterns in your application, but also to learn how the development team applies

patterns when writing code. Enterprise Library embodies many design patterns, and dem-

onstrates good architectural and coding techniques. The source code for the entire library

is provided, so you can explore the implementations and reuse the techniques in your own

applications.

And, finally, it is free! Or rather, it is distributed under the Microsoft Public License

(MSPL) that grants you a royalty-free license to build derivative works, and distribute

them free—or even sell them. You must retain the attribution headers in the source files,

but you can modify the code and include your own custom extensions. Do you really need

any other reasons to try Enterprise Library?

You’ll notice that, even though we didn’t print “Don’t Panic!” in large friendly letters

on the cover, this book does take a little time to settle down into a more typical style

of documentation, and start providing practical examples. However, you can be sure

that—from here on in—you’ll find a whole range of guidance and examples that will

help you master Enterprise Library quickly and easily. There are other resources to help

if you’re getting started with Enterprise Library (such as hands-on-labs), and there’s

help for existing users as well (such as the breaking changes and migration information

for previous versions) available at http://www.codeplex.com/entlib/. You can also visit

the source code section of the site to see what the Enterprise Library team is working

on as you read this guide.

6 ch a p ter one

Some Fundamentals of Enterprise Library

Before we dive into our tour of the application blocks and features of Enterprise Library,

you need to grasp some fundamentals. In this chapter, the Librarian will help you explore

topics such as how to install and deploy the library, and how to perform initial configura-

tion. After that, you’ll be free to skip to any of the other chapters and learn more about

the ways that each block helps you to simplify your code and manage your crosscutting

concerns. For more information about the topics covered in this chapter, see the product

documentation installed with Enterprise Library, or the online documentation available at

http://go.microsoft.com/fwlink/?LinkId=188874.

Enterprise Library is a “pick and mix” candy store, where you choose just the features you

want to use and simply disregard the rest. Of course, before you can choose your favorite

candies from the tempting displays in the candy store, you need to find a paper bag to

hold them. You can think of this as a prerequisite for picking and mixing, and a basic

feature that you will use every time—irrespective of whether you choose gummy bears,

chocolate-covered hazelnuts, or mint imperials.

Likewise, with Enterprise Library, there are prerequisites and basic features. The main

prerequisite before you start development is to install the binaries and support files onto

your machine. The basic features that you need every time you use Enterprise Library are

the core assemblies that implement access to configuration, object creation, and ancillary

features used by all of the blocks.

However, when you install Enterprise Library, you can choose which of the applica-

tion blocks you want to install; though it is generally a good idea to install them all unless

you are sure you will not use specific blocks. Some blocks have dependencies on other

blocks, and installing all of them while developing your applications will simplify configu-

ration and ensure that you do not have to re-run the installer to add other blocks later on.

When you come to deploy your application, you only need to deploy the blocks you are

using and their dependent blocks.

For example, the Exception Handling block depends on the Logging block for logging

exception information. Table 1 shows the full list of these dependencies.

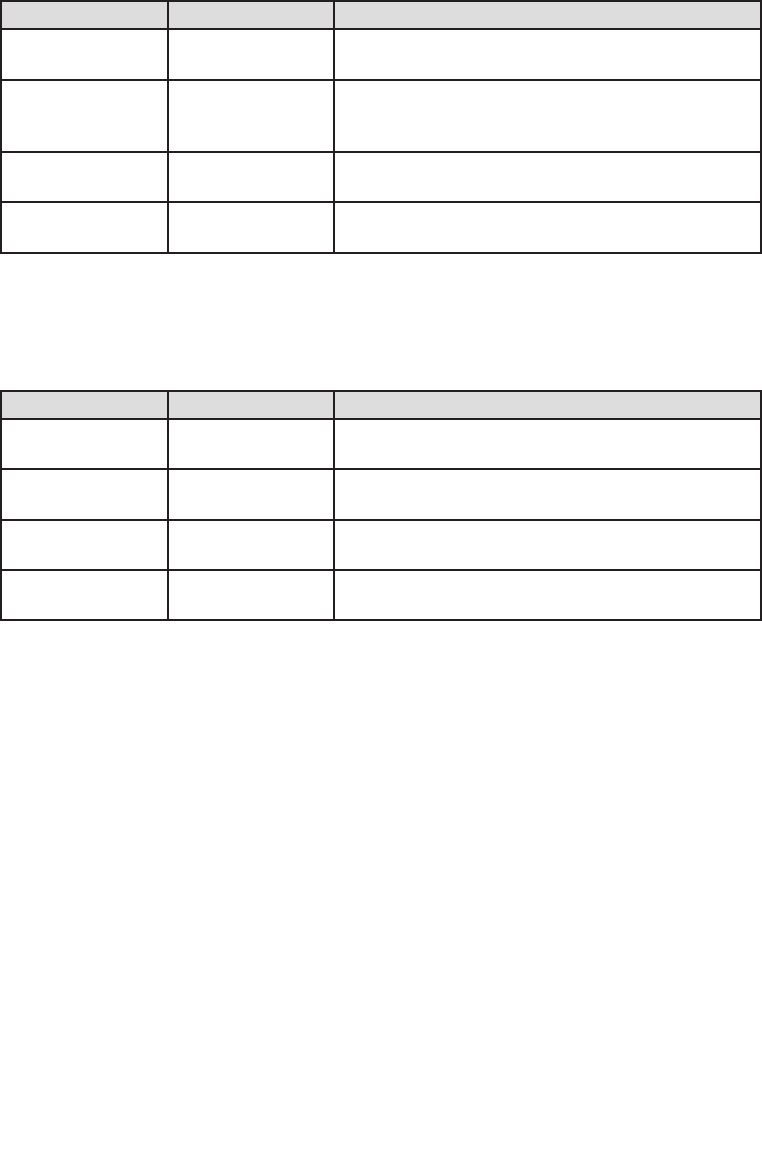

Application block optional dependencies

Application Block Optional dependencies

Caching Block May use the Data Access block to cache data in a database.

May use the Cryptography block to encrypt cached data.

Exception Handling Block May use the Logging block to log exception information.

May use the Data Access block to log exception information to

a database.

Logging Block May use the Data Access block to log to a database.

Security Block May use the Caching block to cache credentials.

May use the Data Access block to cache credentials in a database.

May use the Cryptography block to encrypt cached credentials.

7welcome to the libr a ry

The configuration tools will automatically add the required block to your application

configuration file with the default configuration when required. For example, when you

add a Logging handler to an Exception Handling block policy, the configuration tool will

add the Logging block to the configuration with the default settings.

The seven application blocks we cover in this guide are the functional blocks that are

specifically designed to help you manage a range of crosscutting concerns. All of these

blocks depend on the core features of Enterprise Library, which in turn depend on the

Unity dependency injection and interception mechanism (the Unity Application Block) to

perform object creation and additional basic functions.

To begin using Enterprise Library you must first install it. You can download the current

version from http://msdn.microsoft.com/entlib/. Simply run the Microsoft Installer (MSI)

package to begin the installation, and select the blocks and features you want to install.

This installs the precompiled binaries ready for you to use, along with the accompanying

tools and resources such as the configuration editor and scripts to install the samples and

instrumentation.

If you want to examine the source code, and perhaps even modify it to suit your own

requirements, be sure to select the option to install the source code when you run the

installer. The source code is included within the main installer as a separate package,

which allows you to make as many working copies of the source as you want and go back

to the original version easily if required. If you choose to install the source, then it’s also

a good idea to select the option to have the installer compile the library for you so that

you are ready to start using it straight away. However, if you are happy to use the precom-

piled assemblies, you do not need to install or compile the source code.

After the installation is complete, you will see a Start menu entry containing links to

the Enterprise Library tools, source code installer, and documentation. The tools include

batch files that install instrumentation, database files, and other features. There are also

batch files that you can use to compile the entire library source code, and to copy all the

assemblies to the bin folder within the source code folders, if you want to rebuild the li-

brary from the source code.

It’s not uncommon, when people first look at Enterprise Library, to see a look of mild

alarm spread across their faces. Yes, there are quite a few assemblies, but remember:

• You only need to use those directly connected with your own scenario.

• Several are required for only very special situations.

• The runtime assemblies you will use in your applications are mostly less than

100 KB in size; and the largest of all is only around 500 KB.

• In most applications, the total size of all the assemblies you will use will be

between 1 and 2 MB.

8 ch a p ter one

The assemblies you should add to any application that uses Enterprise Library are the

common (core) assembly, the Unity dependency injection mechanism (if you are using the

default Unity container), and the container service location assembly:

• Microsoft.Practices.EnterpriseLibrary.Common.dll

• Microsoft.Practices.Unity.dll

• Microsoft.Practices.Unity.Interception.dll

• Microsoft.Practices.ServiceLocation.dll

You will also need the assembly Microsoft.Practices.Unity.Configuration.dll

if you wish to reference specific Unity configuration classes in your code. However,

in the majority of cases, you will not require this assembly.

In addition to the required assemblies, you must reference the assemblies that implement

the Enterprise Library features you will use in your application. There are several assem-

blies for each application block. Generally, these comprise a main assembly that has the

same name as the block (such as Microsoft.Practices.EnterpriseLibrary.Logging.dll),

plus additional assemblies that implement specific handlers or capabilities for the block.

You only need these additional assemblies if you want to use the features they add. For

example, in the case of the Logging block, there is a separate assembly for logging to a

database (Microsoft.Practices.EnterpriseLibrary.Logging.Database.dll). If you do not

log to a database, you do not need to reference this additional assembly.

GAC or Bin, Signed or Unsigned?

All of the assemblies are provided as precompiled signed versions that you can install into

the global assembly cache (GAC) if you wish. However, if you need to run different ver-

sions of Enterprise Library assemblies side by side, this may be problematic and you may

prefer to locate them in folders close to your application.

You can then reference the compiled assemblies in your projects, which automati-

cally copies them to the bin folder. In a Web application, you can simply copy them di-

rectly to your application’s bin folder. This approach gives you simple portability and easy

installation.

Alternatively, you can install the source code for Enterprise Library and use the scripts

provided to compile unsigned versions of the assemblies. This is useful if you decide to

modify the source code to suit your own specific requirements. You can strong name and

sign the assemblies using your own credentials afterwards if required.

For more information about side-by-side operation and other deployment issues,

see the documentation installed with Enterprise Library and available online at http://

go.microsoft.com/fwlink/?LinkId=188874.

9welcome to the libr a ry

Importing Namespaces

After you reference the appropriate assemblies in your projects, you will probably want

to add using statements to your project files to simplify your code and avoid specifying

objects using the full namespace names. Start by importing the two core namespaces that

you will require in every project that uses Enterprise Library:

• Microsoft.Practices.EnterpriseLibrary.Common

• Microsoft.Practices.EnterpriseLibrary.Common.Configuration

Depending on how you decide to work with Enterprise Library in terms of instantiating

the objects it contains, you may need to import two more namespaces. We’ ll come to

this when we look at object instantiation in Enterprise Library a little later in this

chapter.

You will also need to import the namespaces for the specific application blocks you are

using. Most of the Enterprise Library assemblies contain several namespaces to organize

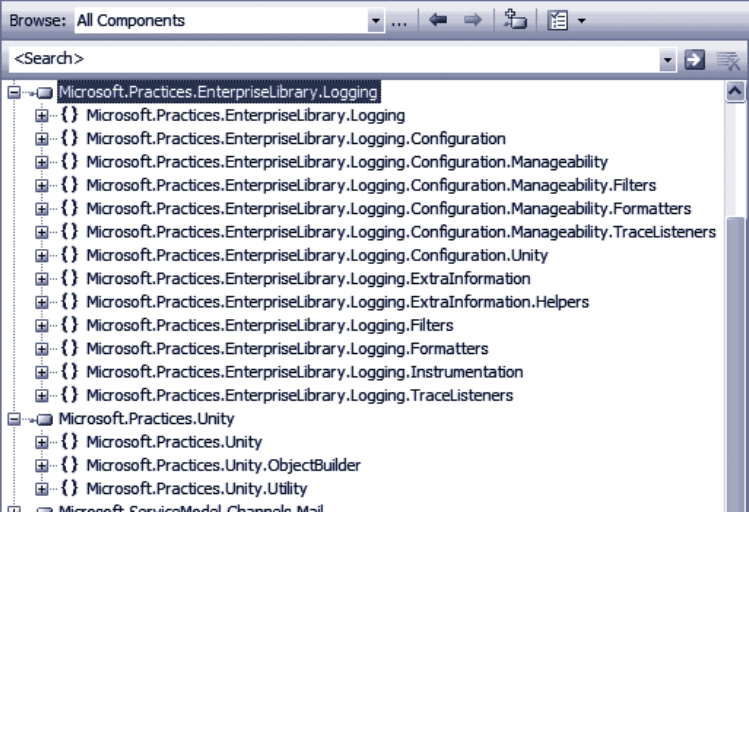

the contents. For example, as you can see in Figure 2, the main assembly for the Logging

block (one of the more complex blocks) contains a dozen subsidiary namespaces. If you

use classes from these namespaces, such as specific filters, listeners, or formatters, you

may need to import several of these namespaces.

Namespaces in the Logging block

10 ch a pter one

Configuring Enterprise Library

Before the original individual application blocks were combined into Enterprise Library,

one of the biggest challenges for users was configuration. You had to edit the sections of

the application configuration file manually, which proved to be error-prone and just plain

annoying. In Enterprise Library, you have a choice of tools for performing configuration

and a wealth of opportunities for defining and managing your configuration informa-

tion.

This flexibility comes about because Enterprise Library uses configuration sources to

expose configuration information to the application blocks and the core features of the

library. The configuration sources can read configuration from standard .NET configura-

tion files (such as App.config and Web.config), from other files, from a database (using the

example SQL Configuration Source available from http://entlib.codeplex.com), and can

also take into account Group Policy rules for a machine or a domain.

In addition, you can use the fluent interface or the .NET configuration API to create

and populate configuration sources programmatically, merge parts of your configuration

with a central shared configuration, generate merged configuration files, and generate

different configurations for individual run-time environments. For more information

about these more advanced configuration scenarios, see Appendix D, “Enterprise Library

Configuration Scenarios.”

Enterprise Library includes a stand-alone configuration console, and a configuration edi-

tor that integrates with Microsoft Visual Studio®. The stand-alone console is provided

as versions specifically aimed at the 32-bit (x86) platform and versions compiled for any

platform. For each of these platforms, there is a separate version of the console for the

3.5 and 4.0 versions of the .NET Framework. You can even copy it (and the assemblies it

uses) to a machine that does not have Enterprise Library installed if you just want to

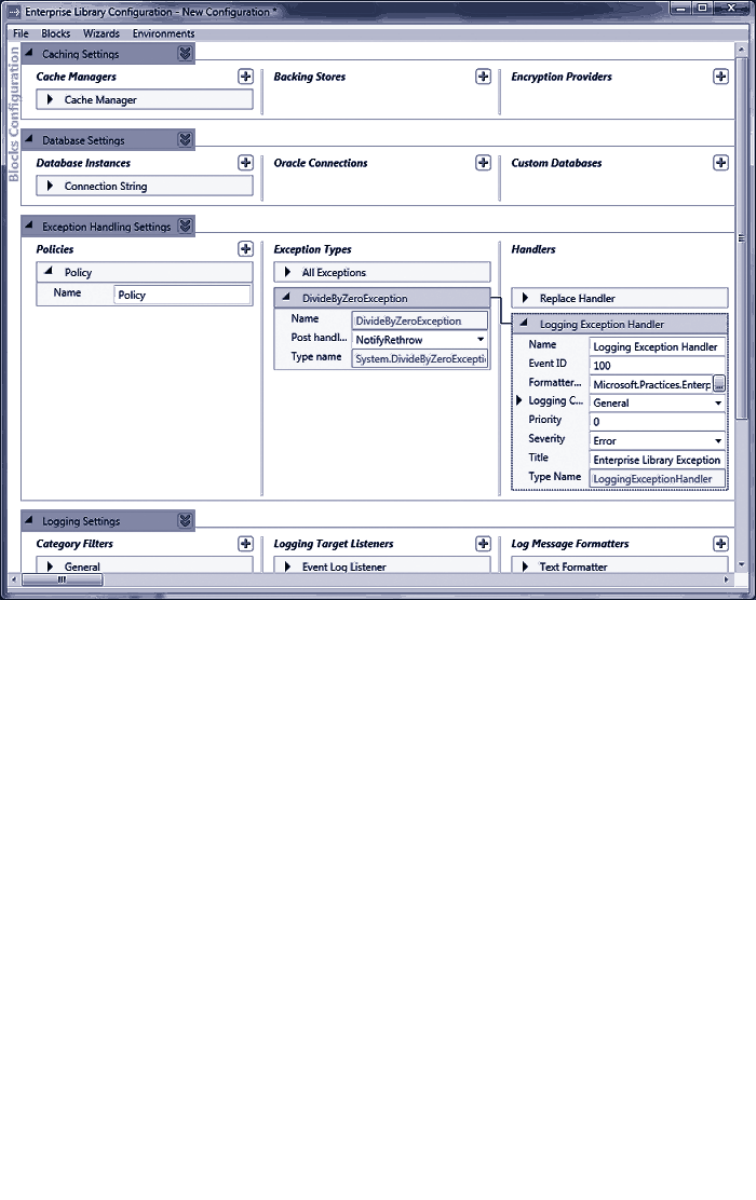

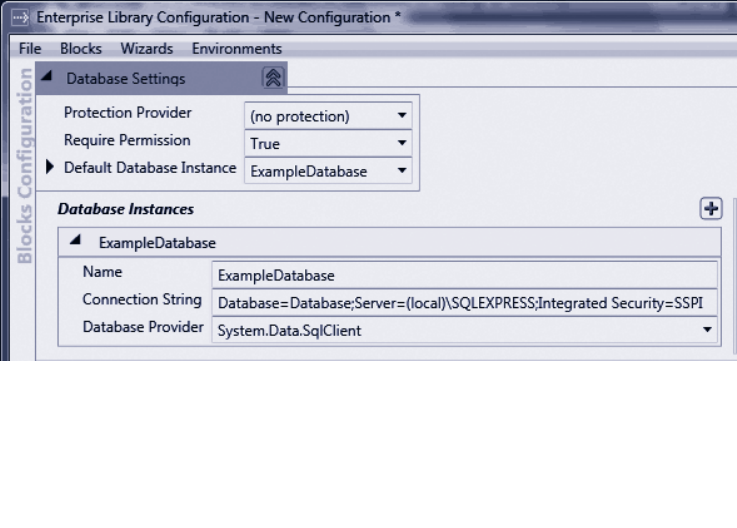

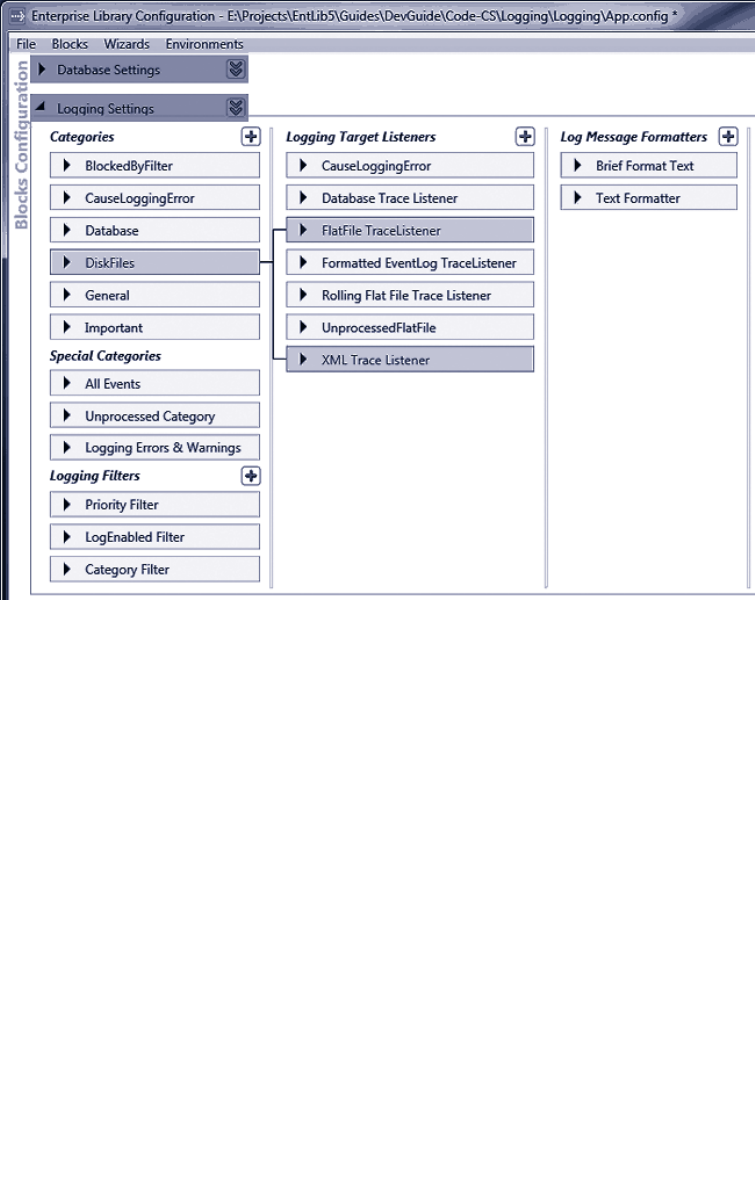

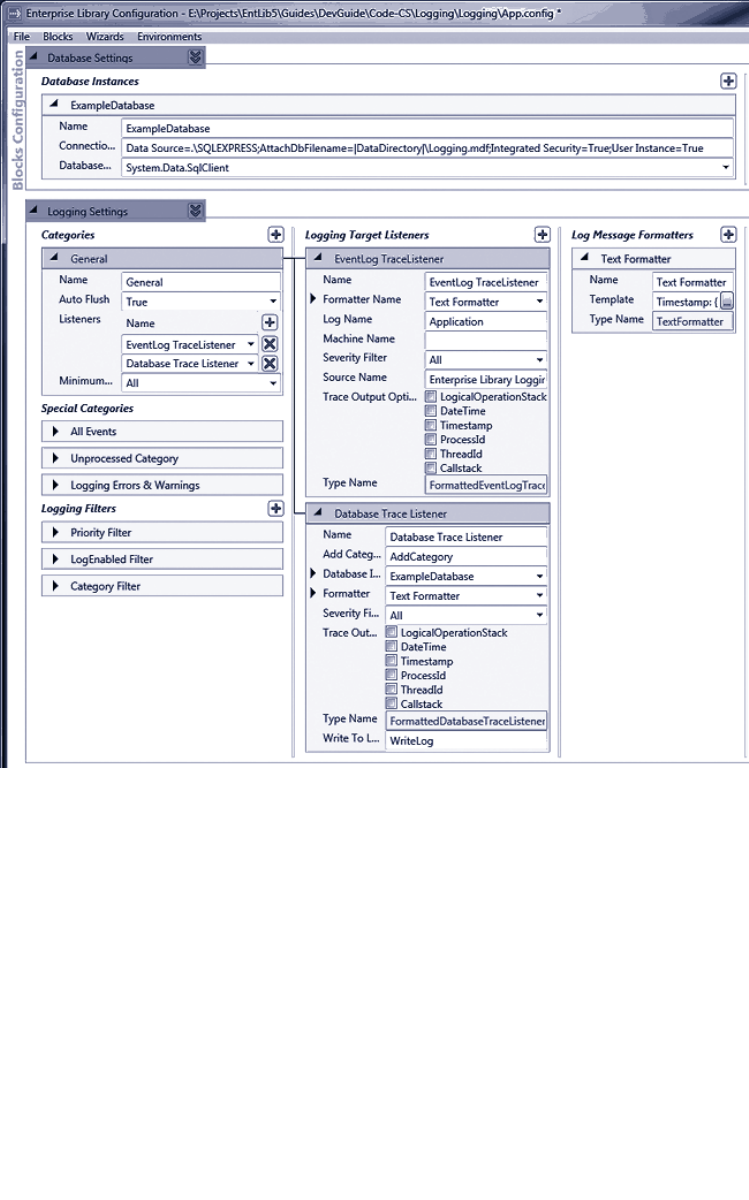

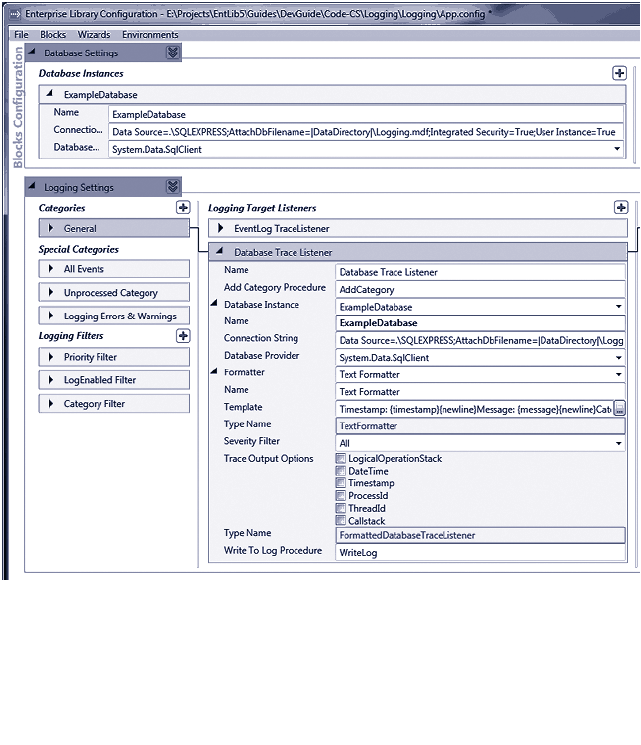

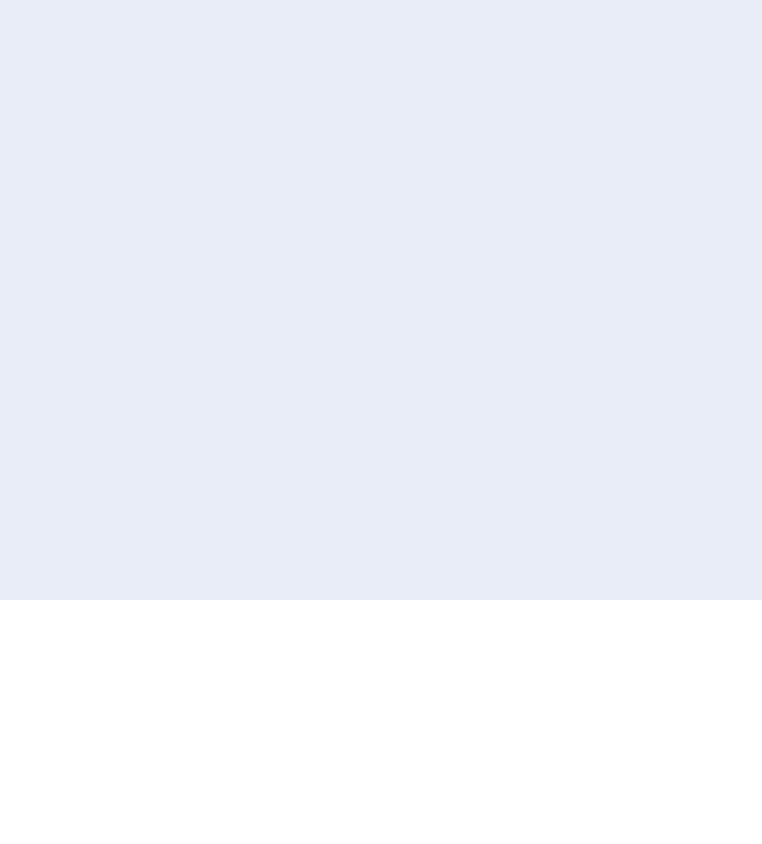

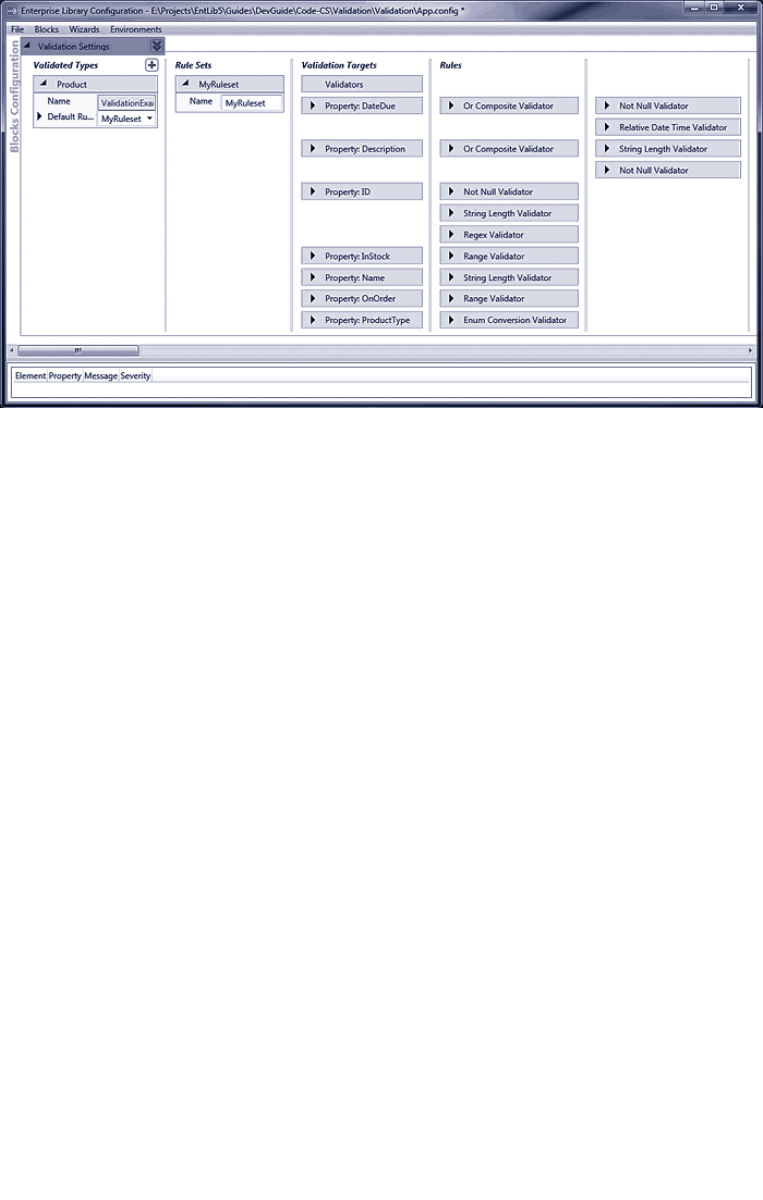

perform post-deployment configuration and system administration. Figure 3 shows the

configuration console with some of the application blocks covered in this book installed

into the configuration.

11welcome to the libr a ry

The Enterprise Library configuration console

The Visual Studio configuration editor displays an interface very similar to that shown in

Figure 3, but allows you to edit your configuration files with a simple right-click in Solu-

tion Explorer.

The most common scenario for basic configuration of an application is to store the con-

figuration information in a local configuration file (such as Web.config or App.config). You

can create a new Enterprise Library configuration in the configuration console and then

save it to disk, or you can open an existing configuration file and edit it to add Enterprise

Library to your application.

Even if you use the more advanced approaches described in Appendix D, “Enterprise

Library Configuration Scenarios,” the techniques for defining your Enterprise Library

configuration are basically the same. The general procedure for configuring an application

is as follows:

12 ch a pter one

1. Open the stand-alone configuration tool from your Start menu, or right-

click on a configuration file in Visual Studio Solution Explorer and click Edit

Enterprise Library V5 Configuration.

2. Click the Blocks menu and select the block you want to add to the configura-

tion. This adds the block with the default settings.

• If you want to use the configuration console to edit values in

the <appSettings> section of your configuration file, select Add

Application Settings.

• If you want to enable instrumentation for Enterprise Library,

select Add Instrumentation Settings.

• If you want to use an alternative source for your configuration, such

as a custom XML file, select Add Configuration Settings.

3. To view the configuration settings for each section, block, or provider, click

the right-facing arrow next to the name of that section, block, or provider.

Click it again, or press the Spacebar key, to collapse this section.

4. To view the properties pane for each main configuration section, click the

downward-facing double arrow. Click it again to close the properties pane.

5. To add a provider to a block, depending on the block or the type of provider,

you either right-click the section in the left column and select the appropriate

Add item on the shortcut menu, or click the plus-sign icon in the appropriate

column of the configuration tool. For example, to add a new exception type to

a policy in the Exception Handling block, right-click the Policy item and click

Add Exception Type.

When you rename items, the heading of that item changes to match the name. For

example, if you renamed the default Policy item in the Exception Handling block,

the item will show the new name instead of “Policy.”

1. Edit the properties of the section, block, or provider using the controls in that

section for that block. You will see information about the settings required,

and what they do, in the subsequent chapters of this guide. For full details of

all of the settings that you can specify, see the documentation installed with

Enterprise Library for that block.

2. To delete a section or provider, right-click the section or provider and click

Delete on the shortcut menu. To change the order of providers when more

than one is configured for a block, right-click the section or provider and click

the Move Up or Move Down command on the shortcut menu.

13w elcome to the libr a ry

3. To set the default provider for a block, such as the default Database for the

Data Access block, click the down-pointing double arrow icon next to the

block name and select the default provider name from the drop-down list.

In this section you can also specify the type of provider used to encrypt this

section, and whether the block should demand full permissions.

For more details about encrypting configuration, see the next section of this chapter.

For information about running the block in partial trust environments, which requires

you to turn off the Require Permission setting, see the documentation installed with

Enterprise Library.

4. To use a wizard to simplify configuration for a common task, such as configur-

ing logging to a database, open the Wizards menu and select the one you

require. The wizard will display a series of dialogs that guide you through

setting the required configuration.

5. If you want to configure different settings for an application based on different

deployment scenarios or environments, open the Environments menu and click

New Environment. This adds a drop-down list, Overrides on Environment, to

each section. If you select Override Properties in this list, you can specify the

settings for each new environment that you add to the configuration. This

feature is useful if you have multiple environments that share the same basic

configuration but require different property settings. It allows you to create a

base configuration file (.config) and an environment delta file that contains

the differences (.dconfig). See Appendix D, “Enterprise Library Configuration

Scenarios” for information on configuring and using multiple environments.

6. As you edit the configuration, the lower section of the tool displays any

warnings or errors in your configuration. You must resolve all errors before you

can save the configuration.

7. When you have finished configuring your application, use the commands on the

File menu to save it as a file in your application folder with the appropriate

name; for example, use Web.config for a Web application and App.config for

a Windows Forms application.

You can, of course, edit the configuration files using a text or XML editor, but this is

likely to be a more tedious process compared to using the configuration console. How-

ever, it may be a useful approach for minor changes to the configuration when the

application is running on a server where the configuration console is not installed.

Enterprise Library also contains an XML configuration schema that you can use to enable

IntelliSense® and simplify hand editing of the configuration files.

To enable the Enterprise Library XML schema in Visual Studio, open the configuration

file, open the XML menu, and click Schemas. In the XML Schemas dialog, locate

the Enterprise Library schema and change the value in the Use column to Use this

schema. Then click OK.

14 ch a pter one

Probably the most common approach for storing configuration information for your

applications that use Enterprise Library is to use an App.config or Web.config file stored

in the root folder of your application. That’s fine, but you may be concerned that anyone

who happens to stroll past the server (either physically, or virtually over the Internet) will

be able to open the file and see sensitive details. These might include connection strings

for the Data Access block, validation rules for the Validation block, or connection infor-

mation used by the Logging block to communicate with Windows Message Queuing.

While in theory, you will protect your configuration files by physically securing the

server and not leaving it running under a logged-on administrator account, you can (and

probably should) add an extra layer of protection by encrypting sections of your configu-

ration files. The configuration tools can do this for you automatically; all you need to do

is set the ProtectionProvider property of the specific block or configuration section that

you want to encrypt. For more information, see Appendix E, “Encrypting Configuration

Files.”

Instantiating and Using Enterprise Library Objects

After you have referenced the assemblies you need, imported the required namespaces,

and configured your application, you can start to think about creating instances of the

Enterprise Library objects you want to use in your applications. As you will see in each of

the following chapters, the Enterprise Library application blocks are optimized for use as

loosely coupled components in almost any type of application. In addition, the change in

this release to using a dependency injection container to generate instances of Enterprise

Library objects means that you can realize the benefits of contemporary design patterns

and solution architectures more easily.

By default, Enterprise Library uses the Unity dependency injection mechanism, which is

provided as part of Enterprise Library. However, it’s possible to configure Enterprise

Library to use any dependency injection container—or other underlying mechanism—

that exposes the required configuration information though an implementation of the

IServiceLocator interface. See Appendix B, “Dependency Injection in Enterprise

Library,” and http://commonservicelocator.codeplex.com for more information.

In Appendix A, “Dependency Injection with Unity,” we take a more in-depth look at what

a dependency injection container actually is, and how it can assist you in applying design

patterns that follow the dependency inversion principle (DIP); in particular, how the

Dependency Injection (DI) pattern can help you to create more decoupled applications

that are easier to build, test, and maintain. However, you don’t need to understand this or

learn about DI to be able to use Enterprise Library. You can create instances of Enterprise

Library objects easily and quickly with a single line of code.

15welcome to the libr a ry

, ,

Each of the application blocks in Enterprise Library contains one or more core objects

that you typically use to access the functionality of that block. An example is the

Exception Handling Application Block, which provides a facade named Exception

Manager that exposes the methods you use to pass exceptions to the block for handling.

The following table lists the commonly used objects for each block.

Application Block Non-static Instance or Factory

Caching ICacheManager

Cryptography CryptographyManager

Data Access Database

Exception Handling ExceptionManager

Logging LogWriter

TraceManager

Security ISecurityCacheProvider

IAuthorizationProvider

Validation ValidatorFactory

ConfigurationValidatorFactory

AttributeValidatorFactory

ValidationAttributeValidatorFactory

There are also task-specific objects in some blocks that you can create directly in your

code in the traditional way using the new operator. For example, you can create

individual validators from the Validation Application Block, or log entries from the

Logging Application Block. We show how to do this in the examples for each applica-

tion block chapter.

To use the features of an application block, all you need to do is create an instance of the

appropriate object, facade, or factory listed in the table above and then call its methods.

The behavior of the block is controlled by the configuration you specified, and often you

can carry out tasks such as exception handling, logging, caching, and encrypting values

with just a single line of code. Even tasks such as accessing data or validating instances of

your custom types require only a few lines of simple code. So, let’s look at how you create

instances of the Enterprise Library objects you want to use.

In this release of Enterprise Library, there are two recommended approaches to creating

instances of the Enterprise Library objects. The decision as to which you use is based

solely on the way you decide to architect your application. You can use the simple ap-

proach of obtaining instances using the Enterprise Library service locator, which provides

access to the Unity container that holds the Enterprise Library configuration information.

Alternatively, if you are already a DI convert, you can take charge of the entire process by

creating and populating a container and using it to create and manage both Enterprise

Library objects and your own custom types. We’ll look at both approaches next.

16 ch a pter one

The Simple Approach — Using the Enterprise Library Service Locator

When you initially create an instance of an Enterprise Library type in your application

code, the underlying mechanism reads your configuration information into a container

and exposes it to your code through a service locator that is initialized as part of the En-

terprise Library configuration mechanism. This service locator provides methods that you

can call at any point in your application code to obtain configured instances of any Enter-

prise Library type.

For example, if you are using the Logging Application Block, you can obtain a refer-

ence to a LogWriter using a single line of code, and then call its Write method to write

your log entry to the configured targets, as shown here.

var writer = EnterpriseLibraryContainer.Current.GetInstance<LogWriter>();

writer.Write("I'm a log entry created by the Logging block!");

Notice that this code uses type inference through the var keyword. The variable will

assume the type returned by the assignment; this technique can make your code more

maintainable.

If you configured more than one instance of a type for a block, such as more than one

Database for the Data Access Application Block, you can specify the name when you call

the GetInstance method. For example, you may configure an Enterprise Library Database

instance named Customers that specifies a Microsoft SQL Server® database, and a sepa-

rate Database instance named Products that specifies another type of database. In this

case, you specify the name of the object you want to resolve when you call the Get

Instance method, as shown here.

var customerDb

= EnterpriseLibraryContainer.Current.GetInstance<Database>("Customers");

You don’t have to initialize the block, read configuration information, or do anything

other than call the methods of the service locator. For many application scenarios, this

simple approach is ideal for obtaining instances of the Enterprise Library types you want

to use.

The Sophisticated Approach — Accessing the Container Directly

If you want to take advantage of design patterns such as Dependency Injection and Inver-

sion of Control in your application, you will probably already be considering the use of a

dependency injection mechanism to decouple your components and layers, and to resolve

types. If this is the case, the more sophisticated approach to incorporating Enterprise

Library into your applications will fit well with your solution architecture.

Instead of allowing Enterprise Library to create, populate, and expose a default con-

tainer that holds just Enterprise Library configuration information, you can create the

container and populate it yourself—and hold onto a reference to the container for use in

your application code. This not only allows you to obtain instances of Enterprise Library

objects, it also lets you use the container to implement dependency injection for your

own custom types. Effectively, the container itself becomes your service locator.

17welcome to the libr a ry

For example, you can create registrations and mappings in the container that specify

features such as the dependencies between the components of your application, map-

pings between types, the values of parameters and properties, interception for methods,

and deferred object creation.

You may be thinking that all of these wondrous capabilities will require a great deal

of code and effort to achieve; however, they don’t. To initialize and populate the default

Unity container with the Enterprise Library configuration information and make it avail-

able to your application, only a single line of code is required. It is shown here:

var theContainer = new UnityContainer()

.AddNewExtension<EnterpriseLibraryCoreExtension>();

Now that you have a reference to the container, you can obtain an instance of any Enter-

prise Library type by calling the container methods directly. For example, if you are using

the Logging Application Block, you can obtain a reference to a LogWriter using a single

line of code, and then call its Write method to write your log entry to the configured

targets.

var writer = theContainer.Resolve<LogWriter>();

writer.Write("I'm a log entry created by the Logging block!");

And if you configured more than one instance of a type for a block, such as more than

one database for the Data Access Application Block, you can specify the name when you

call the Resolve method, as shown here:

var customerDb = theContainer.Resolve<Database>("Customers");

You may have noticed the similarity in syntax between the Resolve method and the

GetInstance method we used earlier. Effectively, when you are using the default Unity

container, the GetInstance method of the service locator simply calls the Resolve

method of the Unity container. It therefore makes sense that the syntax and parameters

are similar. Both the container and the service locator expose other methods that allow

you to get collections of objects, and there are both generic and non-generic overloads

that allow you to use the methods in languages that do not support generics.

One point to note if you choose this more sophisticated approach to using Enterprise

Library in your applications is that you should import two additional namespaces into

your code. These namespaces include the container and core extension definitions:

• Microsoft.Practices.EnterpriseLibrary.Common.Configuration.Unity

• Microsoft.Practices.Unity

18 ch a pter one

Pros and Cons of Object Instantiation

If you haven’t already decided which approach to follow for creating Enterprise Library

objects, the following table will help you to understand the advantages and disadvan-

tages of each one.

Object instantiation

technique

Advantages Considerations

Using the

Enterprise Library

service locator

Requires no initialization code. The

service locator is made available

automatically.

You can resolve types anywhere in

your application code. You don’t need

to hold onto a reference to the

container.

You can only resolve Enterprise

Library types (as interfaces, abstract

types, or concrete types that are

registered automatically).

You cannot manipulate, or add

registrations or mappings to the

container.

Using the container

as the service

locator

You can directly access all the

functionality of the Unity container.

You can iterate over the contents and

read or manipulate the registrations

and mappings (though you should not

attempt to change the Enterprise

Library configuration information).

You can add and remove your own

registrations and mappings, allowing

you to take full advantage of DI

techniques.

Requires initialization, though this

is simply one line of code executed

at application startup, or simple

configuration settings, when you

use the default Unity container.

Request-based applications such

as ASP.NET and Web services

require additional code to store the

container reference and resolve

the dependencies of the request

class (such as the Page).

One of the prime advantages of the more sophisticated approach of accessing the con-

tainer directly is that you can use it to resolve dependencies of your own custom types.

For example, assume you have a class named TaxCalculator that needs to perform logging

and implement a consistent policy for handling exceptions that you apply across your

entire application. Your class will contain a constructor that accepts an instance of an

ExceptionManager and a LogWriter as dependencies.

public class TaxCalculator

{

private ExceptionManager _exceptionManager;

private LogWriter _logWriter;

public TaxCalculator(ExceptionManager em, LogWriter lw)

{

this._exceptionManager = em;

this._logWriter = lw;

}

...

}

19welcom e to the libr a ry

If you use the Enterprise Library service locator approach, you could simply obtain these

instances within the class constructor or methods when required, rather than passing

them in as parameters. However, a more commonly used approach is to generate and reuse

the instances in your main application code, and pass them to the TaxCalculator when

you create an instance.

var exManager

= EnterpriseLibraryContainer.Current.GetInstance<ExceptionManager>();

var writer

= EnterpriseLibraryContainer.Current.GetInstance<LogWriter>();

TaxCalculator calc = new TaxCalculator(exManager, writer);

Alternatively, if you have created and held a reference to the container, you just need to

resolve the TaxCalculator type through the container. Unity will instantiate the type,

examine the constructor parameters, and automatically inject instances of the Exception-

Manager and a LogWriter into them. It returns your new TaxCalculator instance with

all of the dependencies populated.

TaxCalculator calc = theContainer.Resolve<TaxCalculator>();

More Reasons to be Sophisticated

It is clear from the preceding examples that managing the container yourself offers con-

siderable advantages in all but the simplest applications or scenarios. And the example

you’ve seen for using dependency injection only scratches the surface of what you can do

using the more sophisticated approach. For example, if you have a reference to the con-

tainer, you can:

• Manage the lifetime of your custom types. They can be resolved by the con-

tainer as singletons, with a lifetime based on the lifetime of the object that

created them, or as a new instance per execution thread.

• Implement patterns such as plug-in and service locator by mapping interfaces

and abstract types to concrete implementations of your custom types.

• Defer creation of the resolved custom type until it is actually required.

• Specify dependencies and values for parameters and properties of the resolved

instances of your custom types.

• Apply interception to your custom types to modify their behavior, implement

management of crosscutting concerns, or add additional functionality.

• Set up hierarchies of dependencies that are automatically populated to achieve

maximum decoupling between components, assist in debugging, simplify

testing, and reduce maintenance cost and effort.

When you use the default Unity container, you have a powerful general-purpose depen-

dency injection mechanism in your arsenal. You can define and modify registrations and

mappings in the container programmatically at run time, or you can define them using

configuration files. Appendix A, “Dependency Injection with Unity,” contains more infor-

mation about using Unity.

20 ch a pter one

To give you a sense of how easy it is to use, the following code registers a mapping

between an interface named IMyService and a concrete type named CustomerService,

specifying that it should be a singleton.

theContainer.RegisterType<IMyService, CustomerService>(

new ContainerControlledLifetimeManager());

Then you can resolve the single instance of the concrete type using the following code.

IMyService myServiceInstance = theContainer.Resolve<IMyService>();

This returns an instance of the CustomerService type, though you can change the actual

type returned at run time by changing the mapping in the container. Alternatively, you can

create multiple registrations or mappings for an interface or base class with different

names and specify the name when you resolve the type.

Unity can also read its configuration from your application’s App.config or Web.

config file (or any other configuration file). This means that you can use the sophisticated

approach to creating Enterprise Library objects and your own custom types, while being

able to change the behavior of your application just by editing the configuration file.

If you want to load type registrations and mappings into a Unity container from a

configuration file, you must add the assembly Microsoft.Practices.Unity.Configuration.

dll to your project, and optionally import the namespace Microsoft.Practices.Unity.

Configuration into your code. This assembly and namespace contains the extension to

the Unity container for loading configuration information.

For example, the following extract from a configuration file initializes the container and

adds the same custom mapping to it as the RegisterType example shown above.

<unity>

<alias alias="CoreExtension"

type="Microsoft.Practices.EnterpriseLibrary.Common.Conguration

.Unity.EnterpriseLibraryCoreExtension,

Microsoft.Practices.EnterpriseLibrary.Common" />

<namespace name="Your.Custom.Types.Namespace" />

<assembly name="Your.Custom.Types.Assembly.Name" />

<container>

<extension type="CoreExtension" />

<register type="IMyService" mapTo="CustomerService">

<lifetime type="singleton" />

</register>

</container>

</unity>

21welcome to the libr a ry

Then, all you need to do is load this configuration into a new Unity container. This requires

just one line of code, as shown here.

var theContainer = new UnityContainer().LoadConguration();

Other techniques we demonstrate in Appendix A, “Dependency Injection with Unity,”

include using attributes to register type mappings and dependencies, defining named

registrations, and specifying dependencies and values for parameters and properties.

The one point to be aware of when you use the more sophisticated technique for creating

objects is that your application is responsible for managing the container, holding a refer-

ence to it, and making that reference available to code that must access the container. In

forms-based applications that automatically maintain global state (for example, applica-

tions built using technologies such as Windows Forms, Windows Presentation Foundation

(WPF), and Silverlight®), you can use an application-wide variable for this.

However, in request-based applications built using technologies such as ASP.NET,

ASMX, and Windows Communication Foundation (WCF), you generally require addi-

tional code to maintain the container and make it available for each request. We discuss

some of the ways that you can achieve this in Appendix B, “Dependency Injection in En-

terprise Library,” and you will find full details in the documentation installed with Enter-

prise Library and available online at http://go.microsoft.com/fwlink/?LinkId=188874.

If you have used versions of Enterprise Library prior to version 5.0, you may be more

familiar with the previous approach to creating objects within your application code.

Earlier versions generally supported or recommended the use of a series of static facades.

While these facades are still supported in version 5.0 for backward compatibility with

existing applications, they are no longer the recommended approach and may be depre-

cated in future releases.

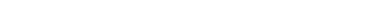

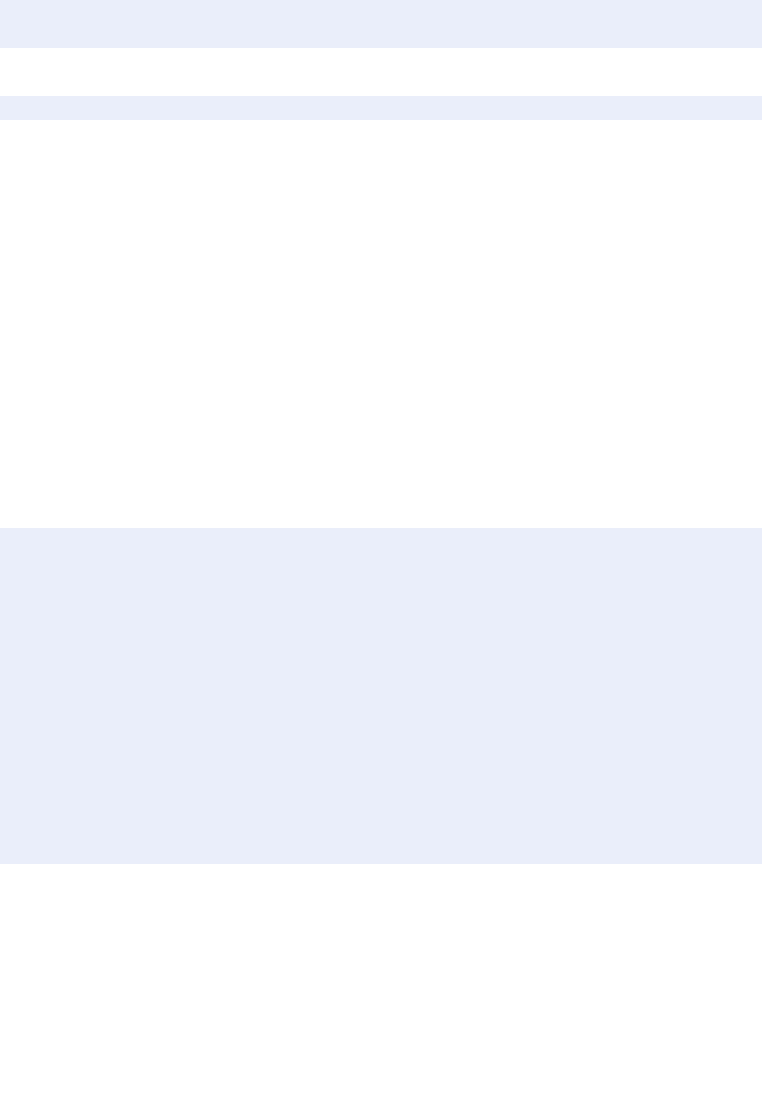

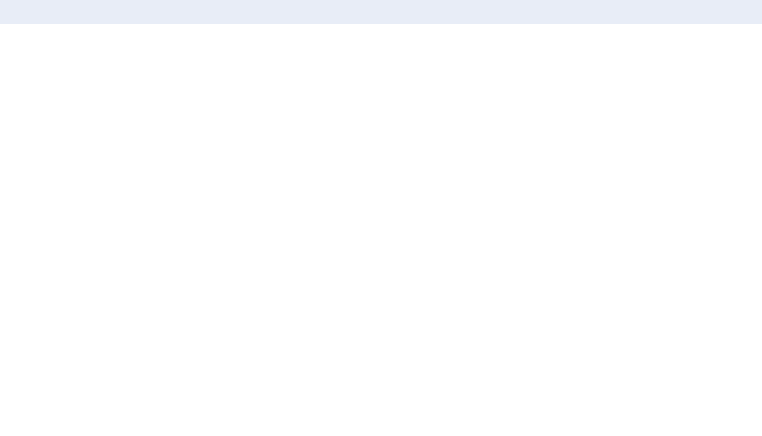

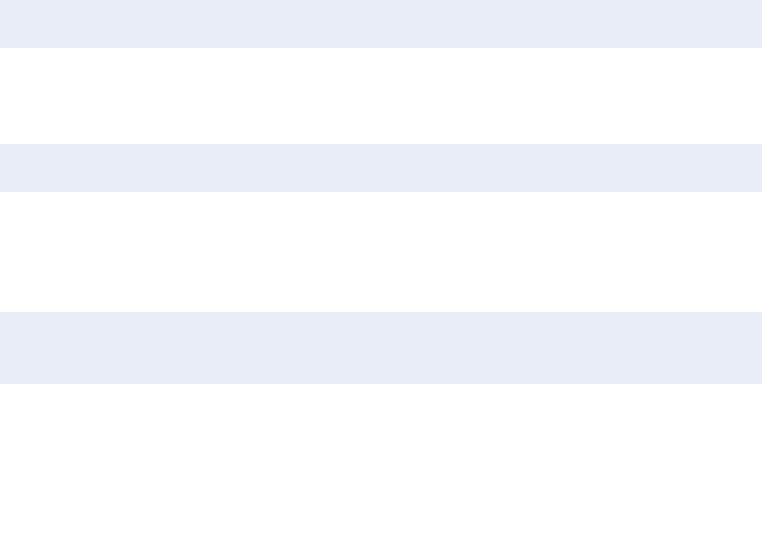

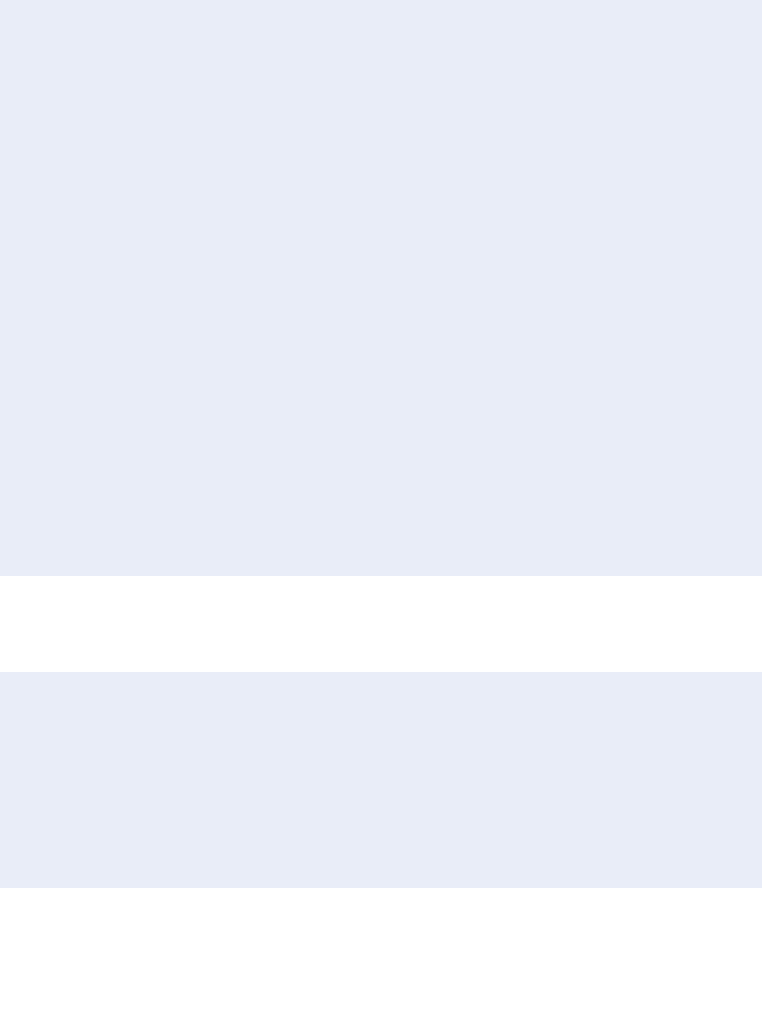

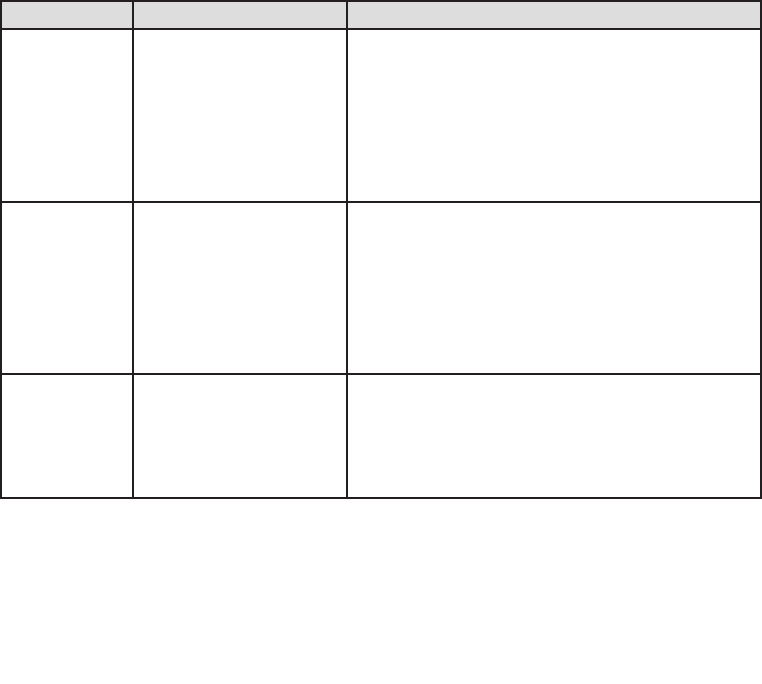

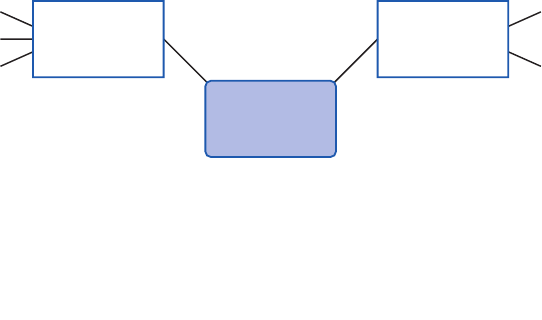

Figure 5 summarizes all the approaches you can use to get access to the features of

Enterprise Library. 1 and 2 are the recommended approaches for Enterprise Library 5.0; 3

and 4 are still supported to make it easier to upgrade your existing applications that use

a previous version of Enterprise Library.

22 ch a pter one

Four ways, one library

The Example Applications

To help you understand how you can use Enterprise Library and each of the seven applica-

tion blocks covered in this guide, we provide a series of simple example applications that

you can run and examine. Each is a console-based application and, in most cases, all of

the relevant code that uses Enterprise Library is found within a series of routines in the

Program.cs file. This makes it easy to see how the different blocks work, and what you

can achieve with each one.

The examples use the simplest approach (the service locator and GetInstance meth-

od described earlier in the chapter) for creating the Enterprise Library objects they require,

and have the configuration information for the blocks they use stored in the App.config

file. Each of the options in the examples exercises specific features of the relevant block

and displays the results. You can open the solutions for these examples in Visual Studio,

or just run the executable file in the bin\debug folder and view the source files in a text

editor if you prefer.

To obtain the example applications, go to

http://go.microsoft.com/fwlink/?LinkId=189009.

var myLogger =

EnterpriseLibraryContainer.Current.GetInstance<LogWriter>( );

myLogger.Write(…);

Configuration

Instance Factory

Service Locator Static Facade

Logger.Write(…);

var factory = new LogWriterFactory( );

var myLogger = factory.Create( );

myLogger.Write(…);

var myLogger = Container.Resolve<LogWriter>( );

myLogger.Write(…);

1

3

4

Container

2

23w elcome to the libr ary

Summary

This brief introduction to Enterprise Library will help you to get started if you are not

familiar with its capabilities and the basics of using it in applications. This chapter de-

scribed what Enterprise Library is, where you can get it, and how it can make it much

easier to manage your crosscutting concerns. This book concentrates on the application

blocks in Enterprise Library that “do stuff” (as opposed to those that “wire up stuff”). The

blocks we concentrate on in this book include the Caching, Cryptography, Data Access,

Exception Handling, Logging, Security, and Validation Application Blocks.

The aim of this chapter was also to help you get started with Enterprise Library by

explaining how you deploy and reference the assemblies it contains, how you configure

your applications to use Enterprise Library, how you instantiate Enterprise Library objects,

and the example applications we provide. Some of the more advanced features and con-

figuration options were omitted so that you may concentrate on the fundamental require-

ments. However, each appendix in this guide provides more detailed information, while

Enterprise Library contains substantial reference documentation, samples, and other re-

sources that will guide you as you explore these more advanced features.

25

Introduction

When did you last write an enterprise-level application where you didn’t need to handle

data? And when you were handling data there was a good chance it came from some kind

of relational database. Working with databases is the single most common task most

enterprise applications need to accomplish, so it’s no surprise that the Data Access Ap-

plication Block is the most widely used of all of the Enterprise Library blocks—and no

coincidence that we decided to cover it in the first of the application block chapters in

this book.

A great many of the millions of Enterprise Library users around the world first cut

their teeth on the Data Access block. Why? Because it makes it easy to implement the

most commonly used data access operations without needing to write the same repetitive

code over and over again, and without having to worry about which database the applica-

tion will target. As long as there is a Data Access block provider available for your target

database, you can use the same code to access the data. You don’t need to worry about

the syntax for parameters, the idiosyncrasies of the individual data access methods, or the

different data types that are returned.

This means that it’s also easy to switch your application to use a different database,

without having to rewrite code, recompile, and redeploy. Administrators and operators

can change the target database to a different server; and even to a different database

(such as moving from Oracle to Microsoft® SQL Server® or the reverse), without affect-

ing the application code. In the current release, the Data Access Application Block con-

tains providers for SQL Server, SQL Server Compact Edition, and Oracle databases. There

are also third-party providers available for the IBM DB2, MySql, Oracle (ODP.NET), Post-

greSQL, and SQLite databases. For more information on these, see http://codeplex.com/

entlibcontrib.

2Much ADO about Data Access

26 ch a pter t wo

What Does the Data Access Application Block Do?

The Data Access Application Block abstracts the actual database you are using, and

exposes a series of methods that make it easy to access that database to perform common

tasks. It is designed to simplify the task of calling stored procedures, but also provides full

support for the use of parameterized SQL statements. As an example of how easy the

block is to use, when you want to fill a DataSet you simply create an instance of the

appropriate Database class, use it to get an appropriate command instance (such as

DbCommand), and pass this to the ExecuteDataSet method of the Database class. You

don’t need to create a DataAdapter or call the Fill method. The ExecuteDataSet method

manages the connection, and carries out all the tasks required to populate your DataSet.

In a similar way, the Database class allows you to obtain a DataReader, execute

commands directly, and update the database from a DataSet. The block also supports

transactions to help you manage multiple operations that can be rolled back if an error

occurs.

In addition to the more common approaches familiar to users of ADO.NET, the Data

Access block also provides techniques for asynchronous data access for databases that

support this feature, and provides the ability to return data as a sequence of objects

suitable for client-side querying using techniques such as Language Integrated Query

(LINQ). However, the block is not intended to be an Object/Relational Mapping (O/RM)

solution. It uses mappings to relate parameters and relational data with the properties of

objects, but does not implement an O/RM modeling solution.

The major advantage of using the Data Access block, besides the simplicity achieved

through the encapsulation of the boilerplate code that you would otherwise need to

write, is that it provides a way to create provider-independent applications that can

easily be moved to use a different source database type. In most cases, unless your code

takes advantage of methods specific to a particular database, the only change required is

to update the contents of your configuration file with the appropriate connection string.

You don’t have to change the way you specify queries (such as SQL statements or stored

procedure names), create and populate parameters, or handle return values. This also

means reduced requirements for testing, and the configuration changes can even be

accomplished through Group Policy.

The following table lists by task the most commonly used methods that the Data Access

Application Block exposes to retrieve and update data. Some of the method names will

be familiar to those used to using ADO.NET directly.

27much a do about data access

Task Methods

Filling a DataSet and

updating the database from

a DataSet.

ExecuteDataSet. Creates, populates, and returns a DataSet.

LoadDataSet. Populates an existing DataSet.

UpdateDataSet. Updates the database using an existing DataSet.

Reading multiple data rows. ExecuteReader. Creates and returns a provider-independent

DbDataReader instance.

Executing a Command. ExecuteNonQuery. Executes the command and returns the number of

rows affected. Other return values (if any) appear as output parameters.

ExecuteScalar. Executes the command and returns a single value.

Retrieving data as a

sequence of objects.

ExecuteSprocAccessor. Returns data selected by a stored procedure

as a sequence of objects for client-side querying.

ExecuteSqlStringAccessor. Returns data selected by a SQL statement

as a sequence of objects for client-side querying.

Retrieving XML data (SQL

Server only).

ExecuteXmlReader. Returns data as a series of XML elements

exposed through an XmlReader. Note that this method is specific

to the SqlDatabase class (not the underlying Database class).

Creating a Command. GetStoredProcCommand. Returns a command object suitable for

executing a stored procedure.

GetSqlStringCommand. Returns a command object suitable for

executing a SQL statement (which may contain parameters).

Working with Command

parameters.

AddInParameter. Creates a new input parameter and adds it to

the parameter collection of a Command.

AddOutParameter. Creates a new output parameter and adds it

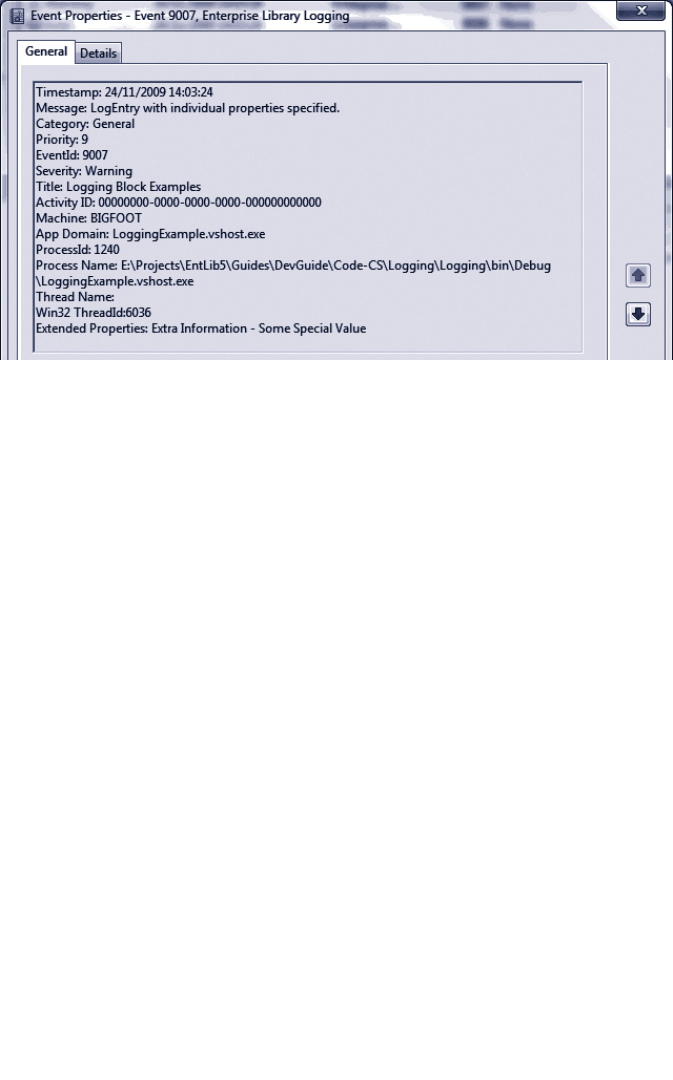

to the parameter collection of a command.