Application Networks Anypoint Platform Architecture APAApp Net Student Manual 02may2018

- Application Networks - Anypoint Platform Architecture

- Table of Contents

- Welcome To Anypoint Platform Architecture: Application Networks

- Module 1. Putting the Course in Context

- Module 2. Introducing MuleSoft, the Application Network Vision and Anypoint Platform

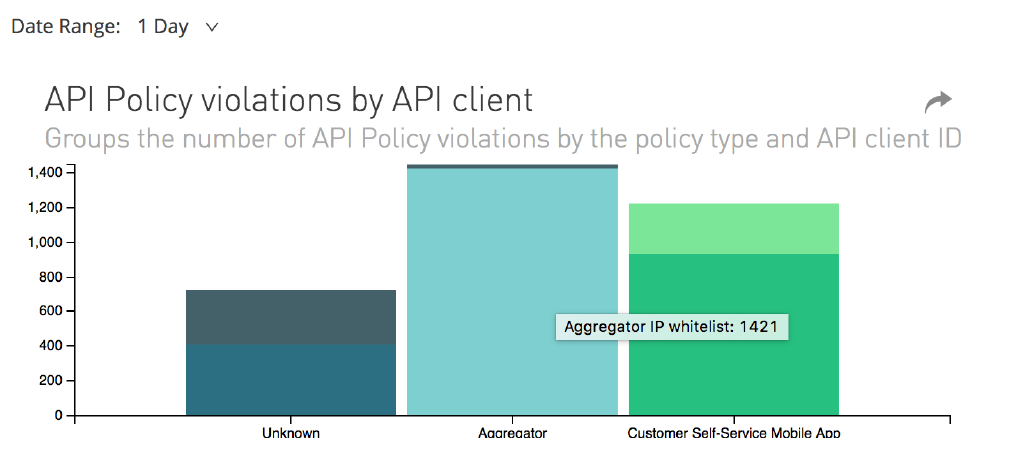

- Module 3. Establishing Organizational and Platform Foundations

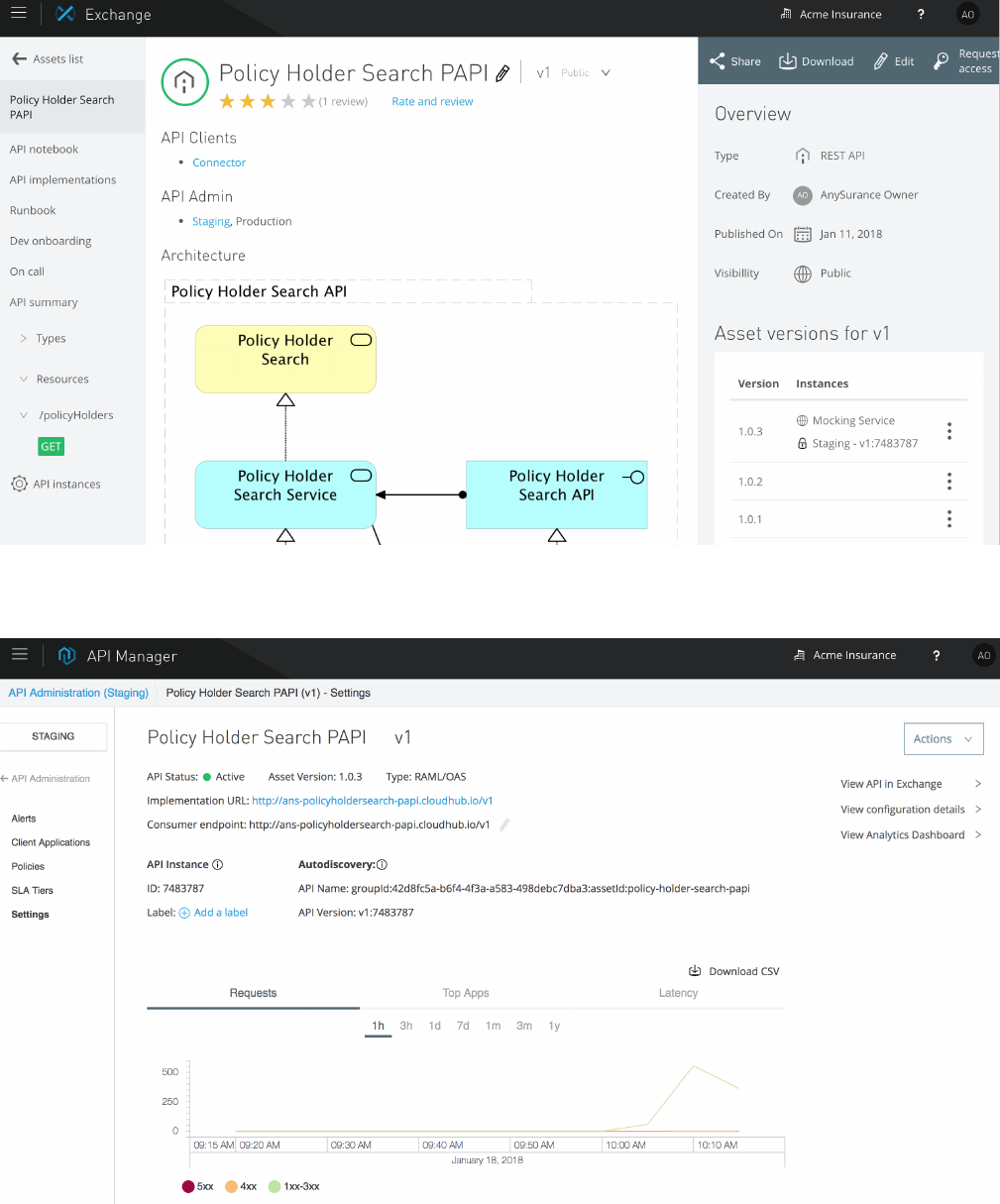

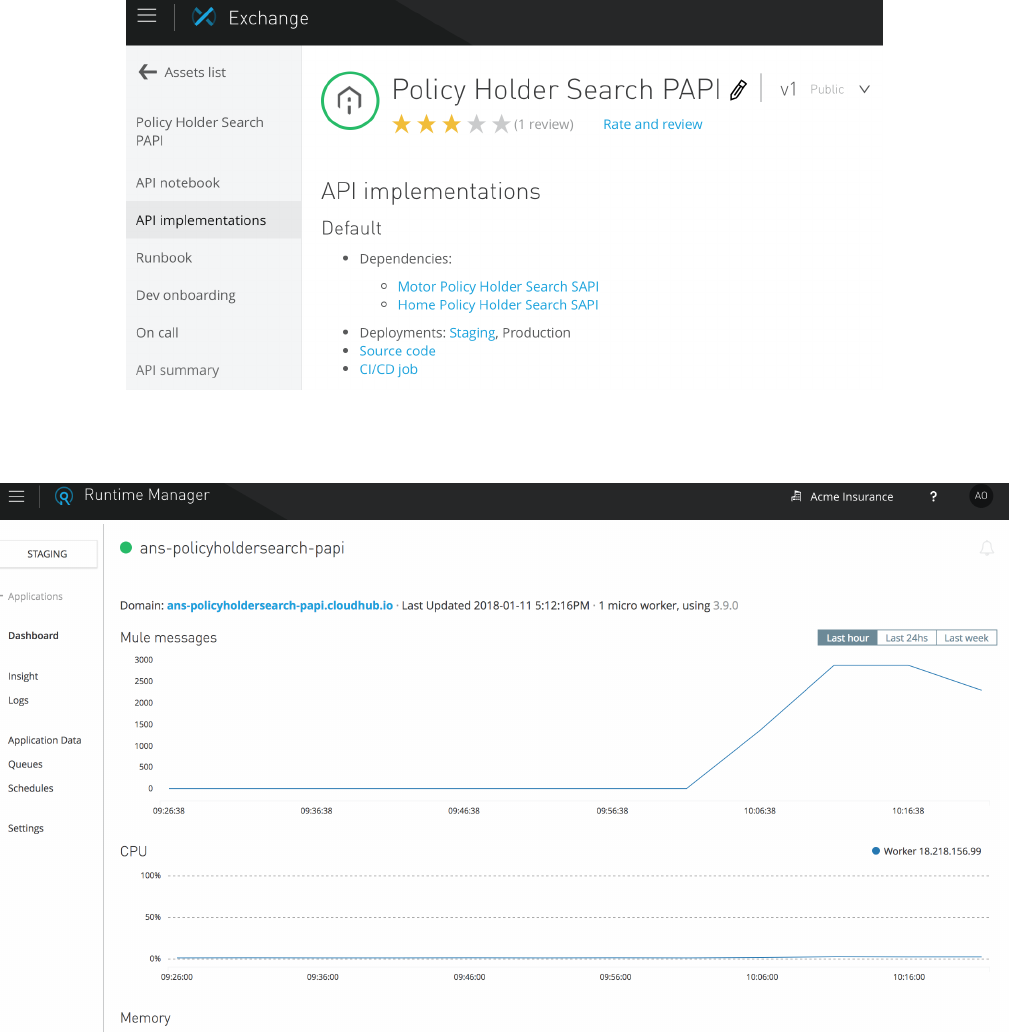

- Module 4. Identifying, Reusing and Publishing APIs

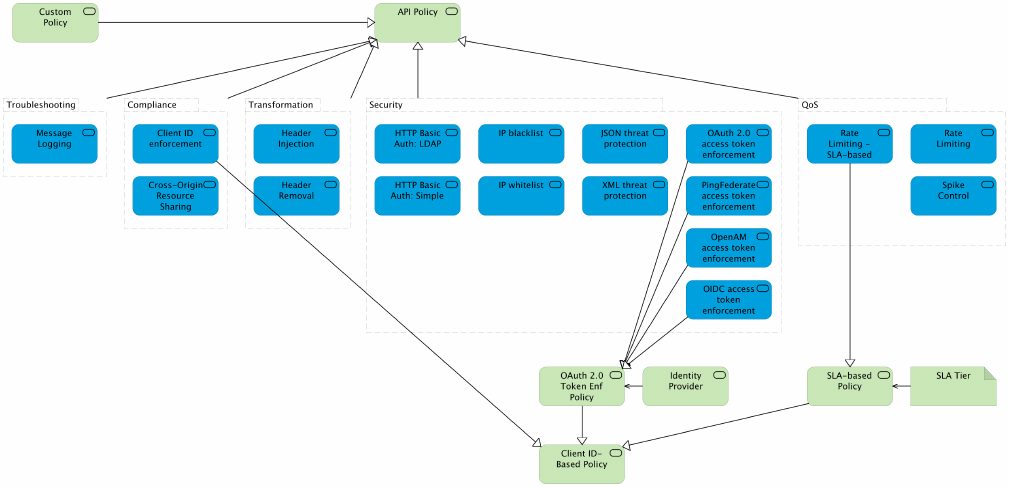

- Module 5. Enforcing NFRs on the Level of API Invocations Using Anypoint API Manager

- Module 6. Designing Effective APIs

- Module 7. Architecting and Deploying Effective API Implementations

- Module 8. Augmenting API-Led Connectivity With Elements From Event-Driven Architecture

- Module 9. Transitioning Into Production

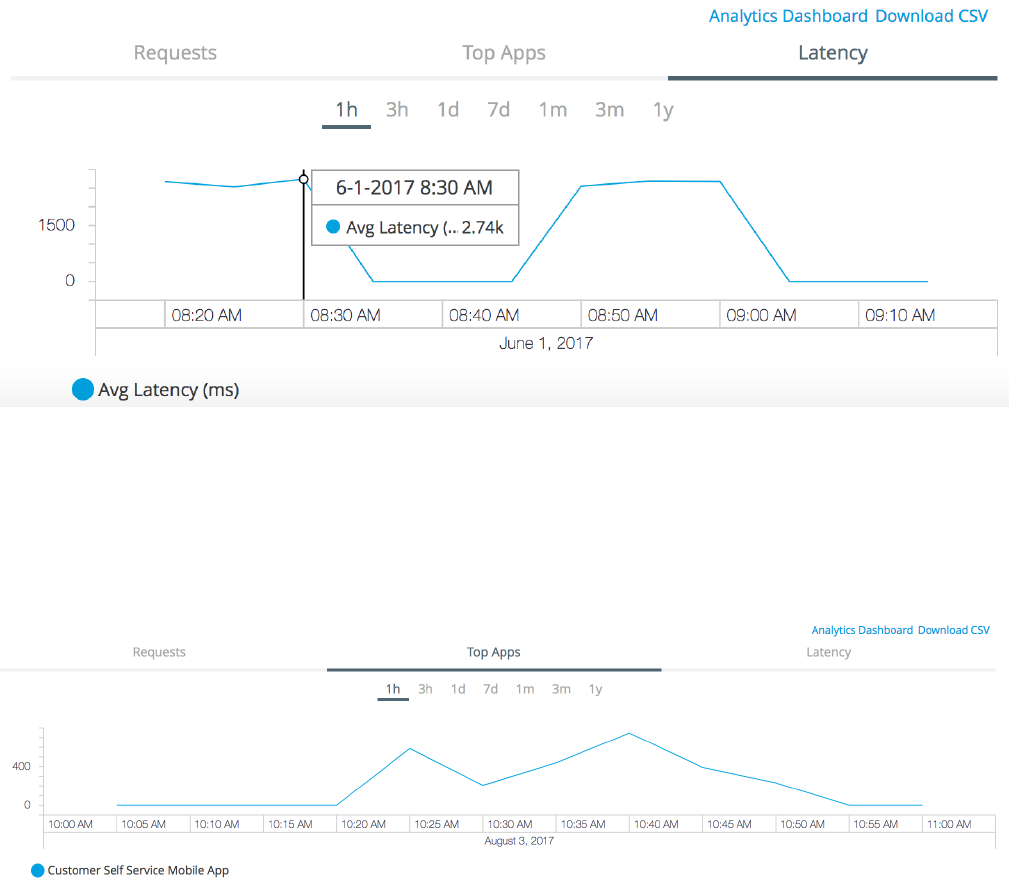

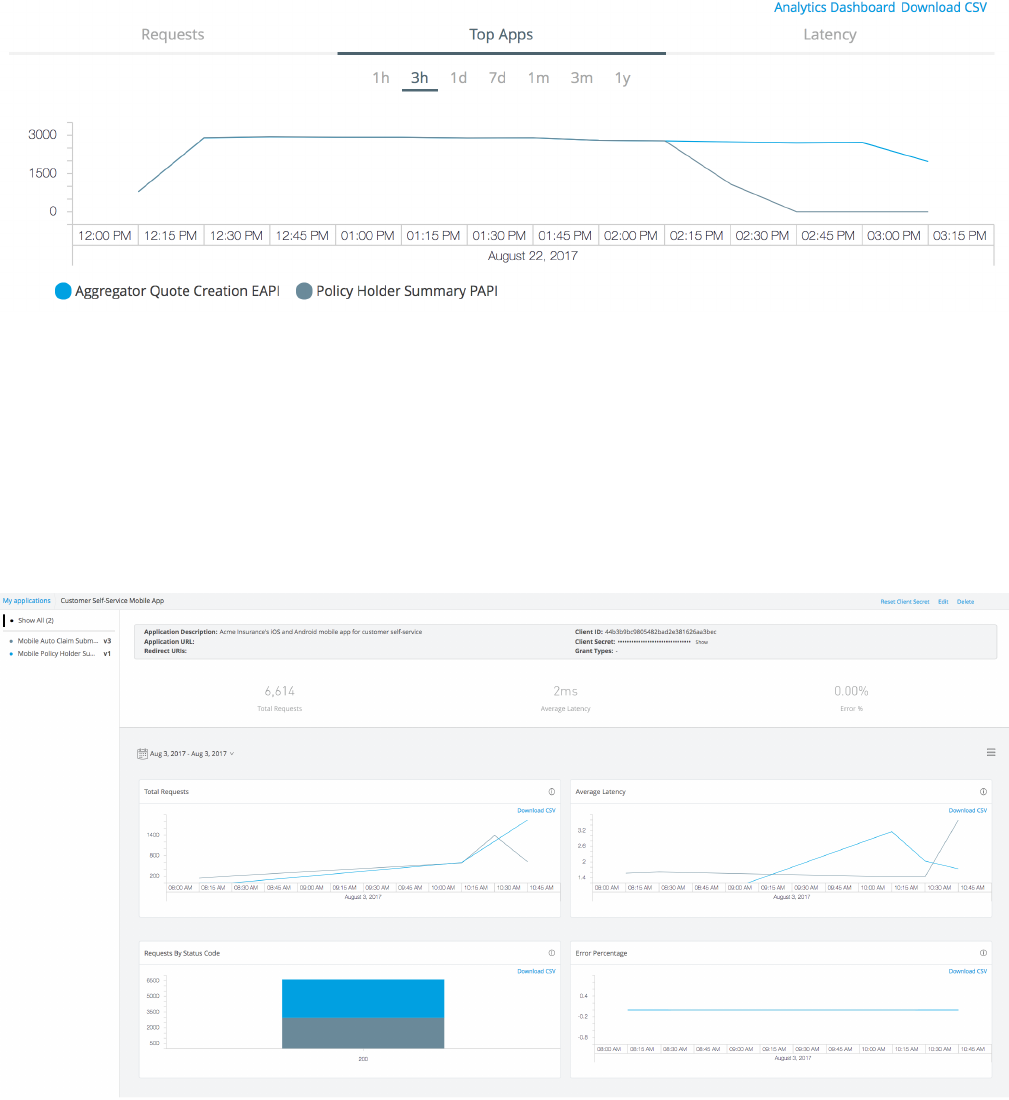

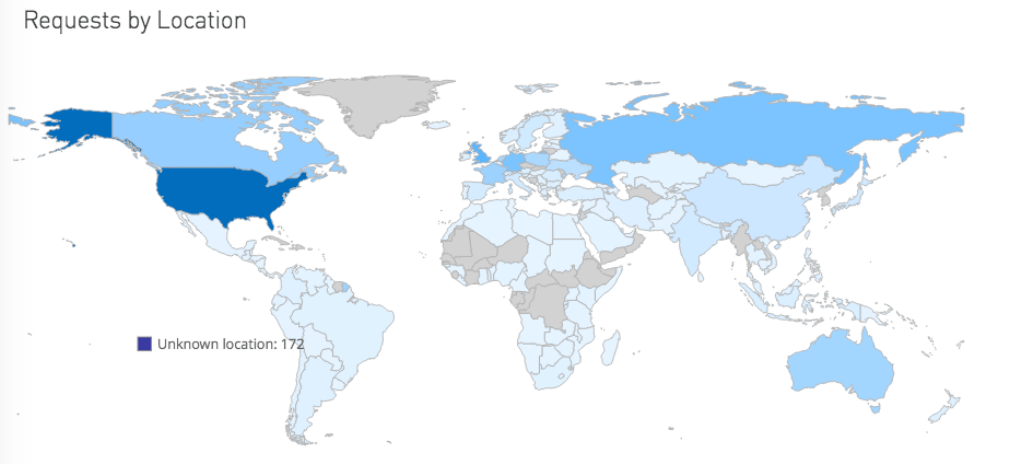

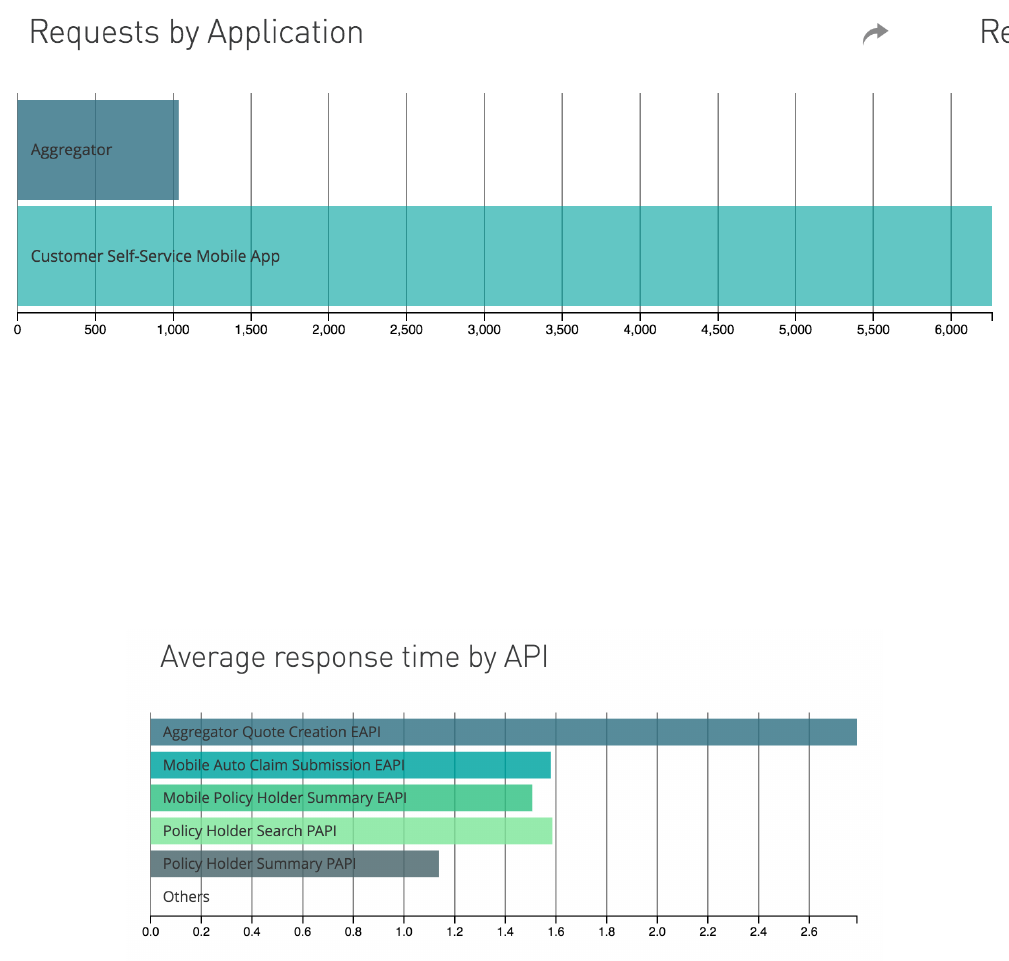

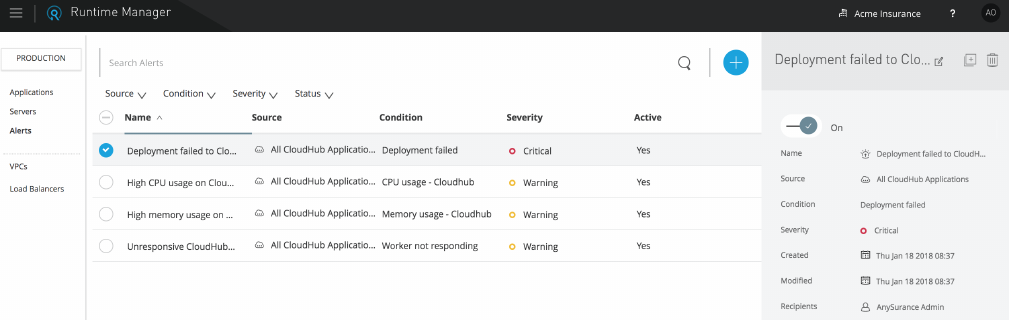

- Module 10. Monitoring and Analyzing the Behavior of the Application Network

- Wrapping-Up Anypoint Platform Architecture: Application Networks

- Appendix A: Documenting the Architecture Model

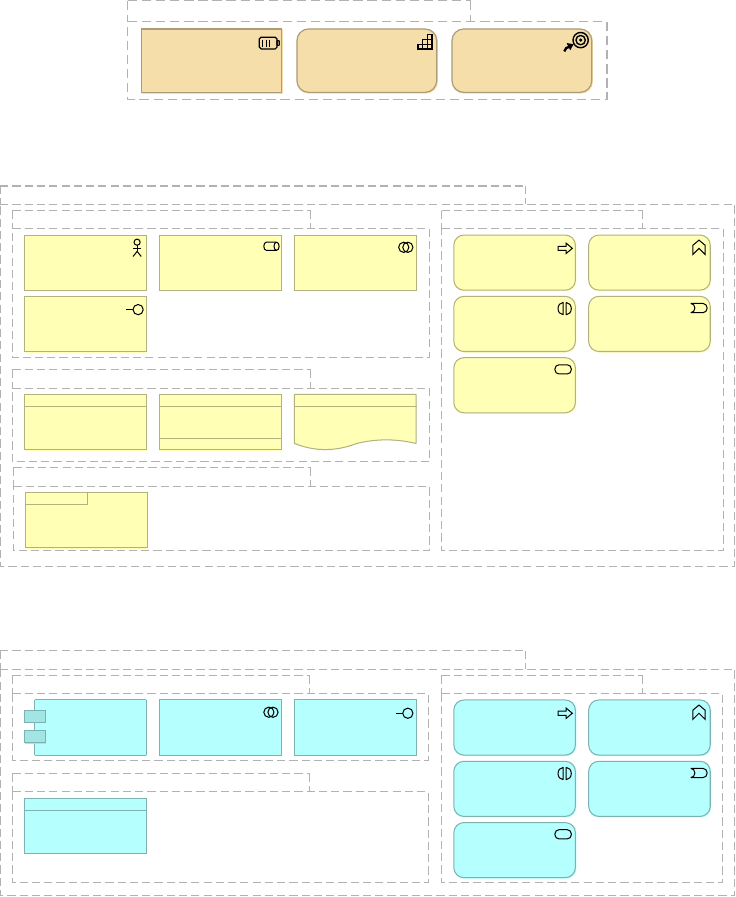

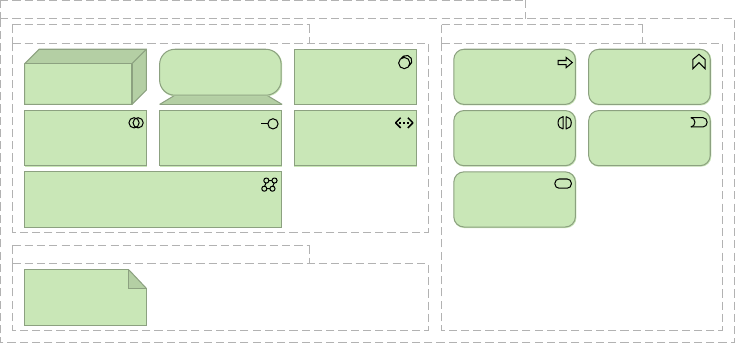

- Appendix B: ArchiMate Notation Cheat Sheets

- Glossary

- Bibliography

- Version History

Application Networks

Anypoint Platform Architecture

MuleSoft Training

2018-05-02

Table of Contents

Welcome To Anypoint Platform Architecture: Application Networks 1

1. Putting the Course in Context 7

2. Introducing MuleSoft, the Application Network Vision and Anypoint Platform 13

3. Establishing Organizational and Platform Foundations 31

4. Identifying, Reusing and Publishing APIs 52

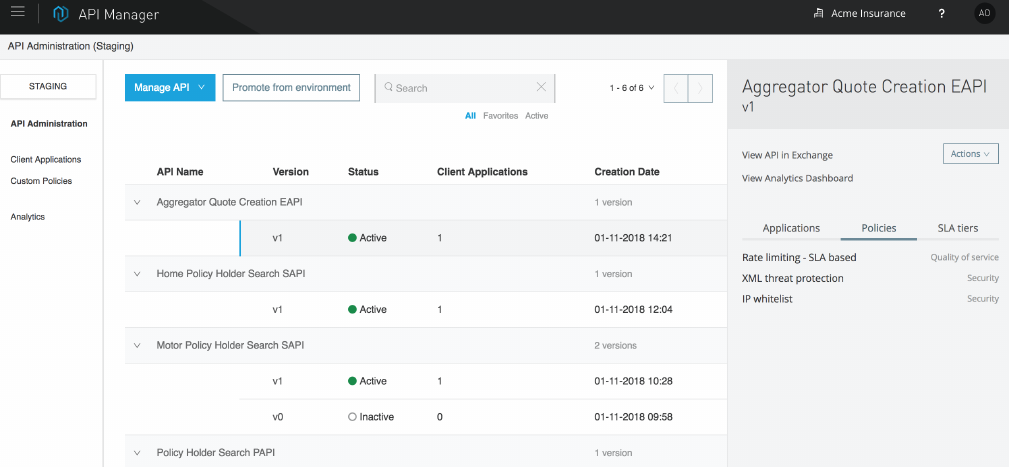

5. Enforcing NFRs on the Level of API Invocations Using Anypoint API Manager 83

6. Designing Effective APIs 112

7. Architecting and Deploying Effective API Implementations 142

8. Augmenting API-Led Connectivity With Elements From Event-Driven Architecture 174

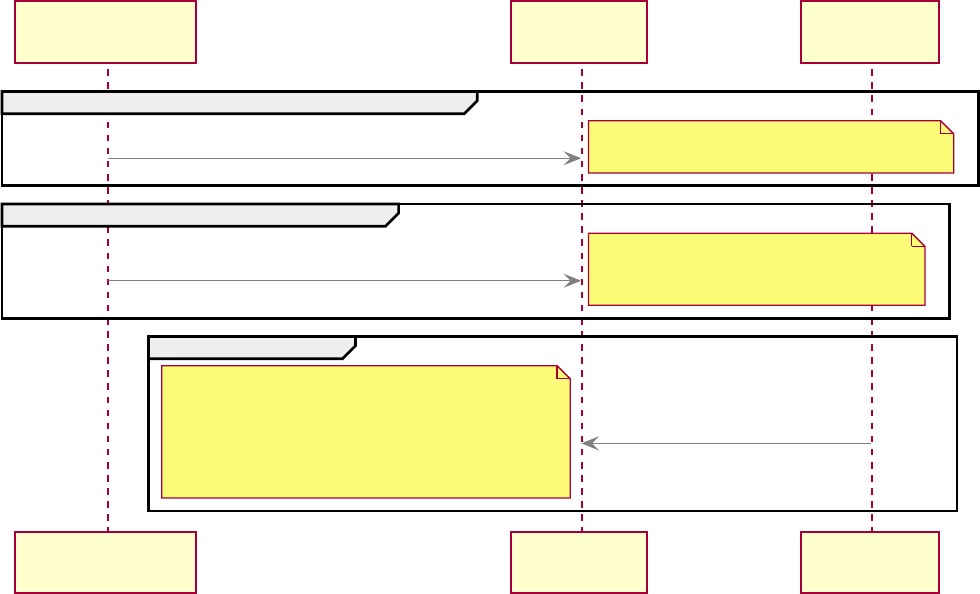

9. Transitioning Into Production 185

10. Monitoring and Analyzing the Behavior of the Application Network 202

Wrapping-Up Anypoint Platform Architecture: Application Networks 218

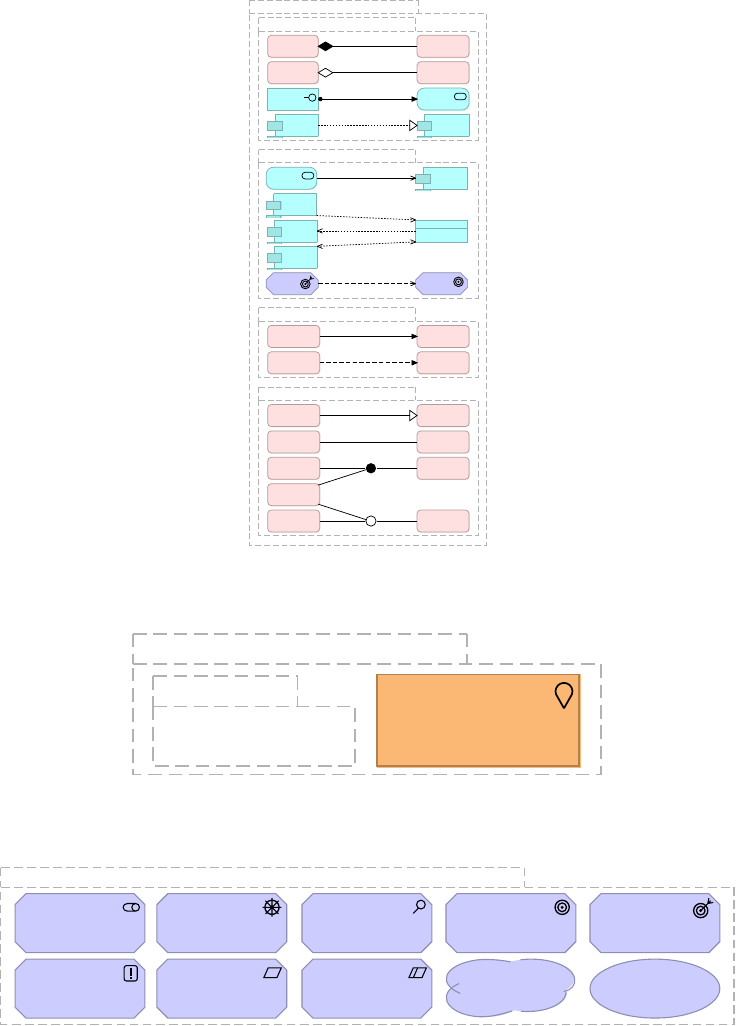

Appendix A: Documenting the Architecture Model 221

Appendix B: ArchiMate Notation Cheat Sheets 225

Glossary 228

Bibliography 240

Version History 241

Welcome To Anypoint Platform Architecture: Application Networks

Introducing the course

Course prerequisites

The target audience of this course is architects, especially Enterprise Architects and Solution Architects, who are new to Anypoint Platform, API-led connectivity and the application network approach but experienced in other integration approaches (e.g., SOA) and integration technologies/platforms.

Prior to attending this course, students are required to gain an overview of Anypoint Platform and its constituent components. This can be achieved by various means, such as

•attending the short Getting Started with Anypoint Platform course

•attending the much more thorough developer-centric courses Anypoint Platform Development: Fundamentals or MuleSoft.U Development Fundamentals

•participating in the 1-day hands-on "API-Led Connectivity Workshop" organized by MuleSoft Presales upon request

Course goals

The overarching goal of this course is to enable students to

•direct the emergence of an effective application network out of individual integration

solutions following API-led connectivity, working with all relevant stakeholders on all levels

of the organization

•create credible high-level architecture models for integration solutions on Anypoint Platform

such that functional and non-functional requirements are likely to be met and the principles

of API-led connectivity and application networks are followed

Course outline

•Welcome To Anypoint Platform Architecture: Application Networks

•Module 1

•Module 2

•Module 3

•Module 4

Anypoint Platform Architecture Application Networks

© 2018 MuleSoft Inc. 1

•Module 5

•Module 6

•Module 7

•Module 8

•Module 9

•Module 10

•Wrapping-Up Anypoint Platform Architecture: Application Networks

How the course will work

This is a course on Enterprise Architecture with elements of Solution Architecture:

•It discusses topics on the scale of Enterprise Architecture, touching lightly on Business

Architecture, and heavily on Application Architecture and Technology Architecture.

•It motivates and builds Enterprise Architecture from strategically important integration

solutions and therefore elaborates on parts of their high-level Solution Architecture.

•It stays away from architecturally insignificant design and implementation discussions:

◦As a rule, these are all topics whose repercussions are confined to individual application

components and are therefore not apparent from outside these application components.

◦When a decision affects the large-scale properties of the application network, however, it

becomes architecturally significant. This is the reason why the course contains a fairly

detailed discussion on strategies for invoking APIs in a fault-tolerant way (7.2).

•No code is shown, neither implementation code nor code for API specifications such as

RAML definitions. However, the topic of API specifications and the features offered by RAML

in this space are touched upon in several places, because they are important for the

functioning of an application network.

This course is primarily driven by a single case study, Acme Insurance, and two imminent

strategically important change initiatives that need to be addressed by Acme Insurance. These

change initiatives provide the background and motivation for most discussions in this course.

As various aspects of the case study are addressed, the discussion naturally elaborates on the

central topic of the course, i.e., how to architect and design application networks using API-led

connectivity and Anypoint Platform.

However, the course cannot jump into architecting without any prior knowledge about Anypoint Platform, what terms like "API-led connectivity" and "application network" actually mean, and how MuleSoft and MuleSoft customers typically approach integration challenges like those faced by Acme Insurance. Therefore, Module 1 and Module 2 provide this context-setting and introduction. Acme Insurance itself is already briefly introduced in the section "Introducing Acme Insurance" and becomes the focus of the discussion from Module 3 onwards.

As the architectural and design discussions in this course unfold, it is inevitable that opinions are expressed, solutions presented and decisions made that are somewhat ambiguous, without a clear-cut distinction between right and wrong: such is the nature of architecture and design. A good example of this is the discussion on Bounded Context Data Model versus Enterprise Data Model in section 6.3. Students are of course encouraged to challenge the decisions made, and to decide differently in similar real-world situations. The crucial point is that the thought processes behind these architectural and design decisions are elaborated on in the course, which creates awareness of the topic and increases understanding of the tradeoffs involved in making a decision.

Exercises, typically in the form of group discussions, are an important element of this course. However, these exercises never involve actually doing something on the computer with Anypoint Platform or any of its components. Instead, they are simply discussions that invite reflection, with the intention of validating and deepening the understanding of a topic addressed in the course.

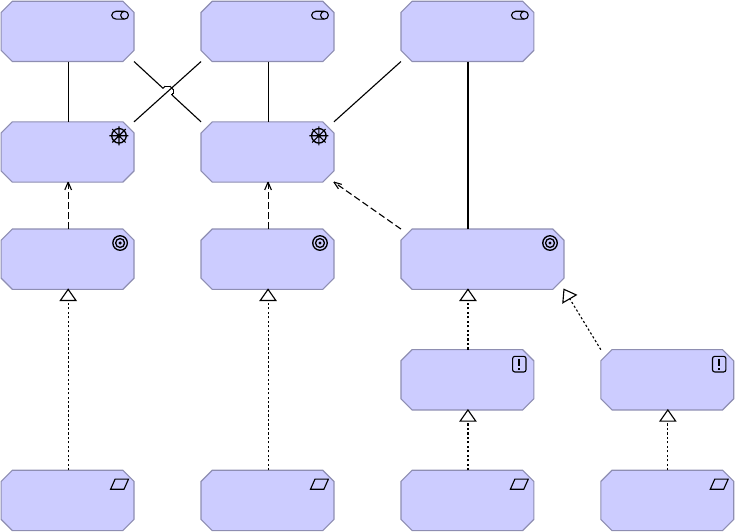

All architecture diagrams use ArchiMate 3 notation. A summary of that notation can be found

in Appendix B.

Course materials and certification

Students receive the Course Manual (this document), a PDF of more than 200 pages, which

contains all material presented on slides plus additional discussions and explanations.

The course manual is somewhere between a bound edition of the slides and a standalone

book: it contains all slide content and enough context around this content to be much easier to

consume than the slides alone would be. On the other hand, the course manual lacks some of

the explanations and elaborations that a full-fledged book would be expected to contain: this

additional depth is provided by the instructor when teaching the course!

MuleSoft offers a certification based on this course. For students fulfilling the course’s

prerequisites, attending class and studying the Course Manual should be sufficient for passing

the exam.

Introducing Acme Insurance

The Acme Insurance organization

Acme Insurance is a well-established, medium-sized, regional insurance provider. They have


two lines of business (LoBs): personal motor insurance and home insurance.

Acme Insurance has recently been acquired by an international competitor: The New Owners.

As one consequence, Acme Insurance is currently being rebranded as a subsidiary of The New

Owners. As another important consequence, Acme Insurance’s strategy is increasingly being

defined by The New Owners.

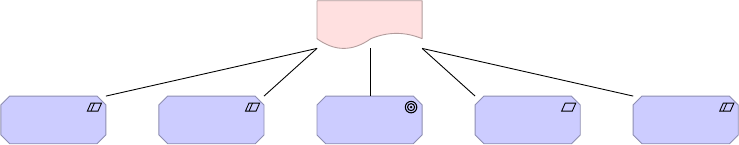

The New

Owners

Acme

Insurance HQ

Corporate HQ

Other

Acquisitions'

HQs

Motor

Underwriting

Home

Underwriting

Home Claims

Acme IT

Corporate IT

Personal

Motor LoB

Home LoB

Motor Claims

Personal

Motor LoB IT

Home LoB IT

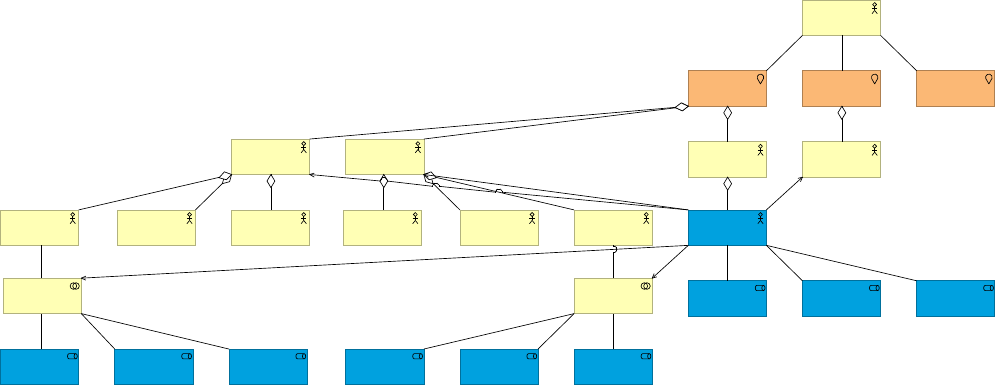

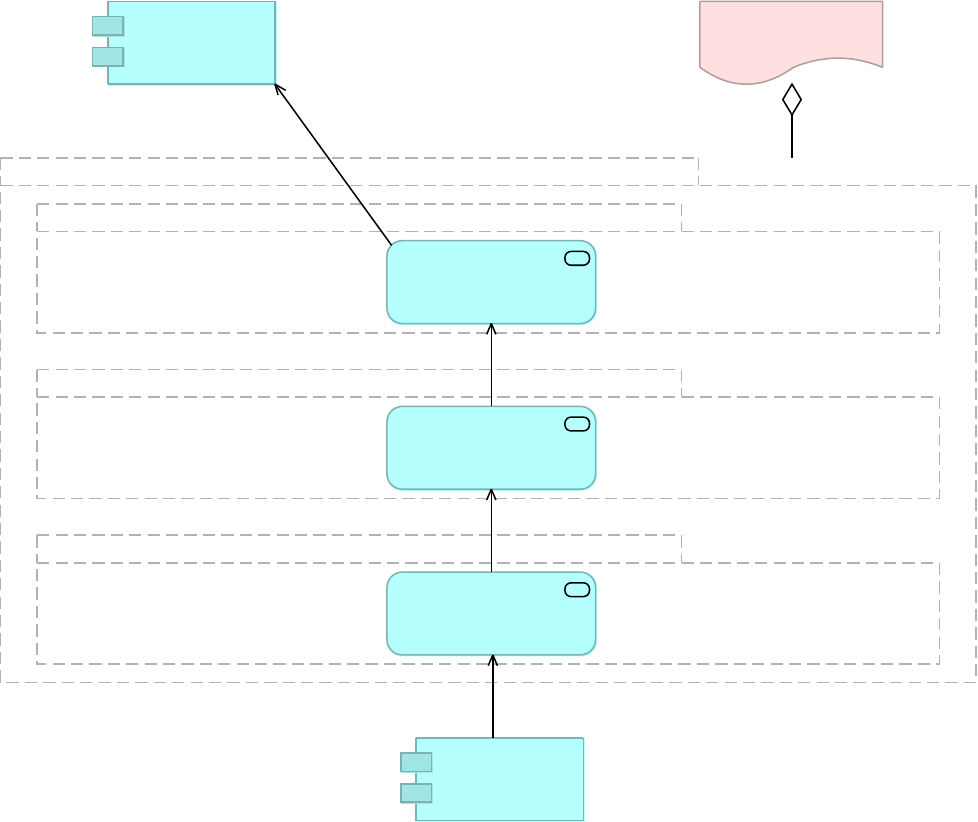

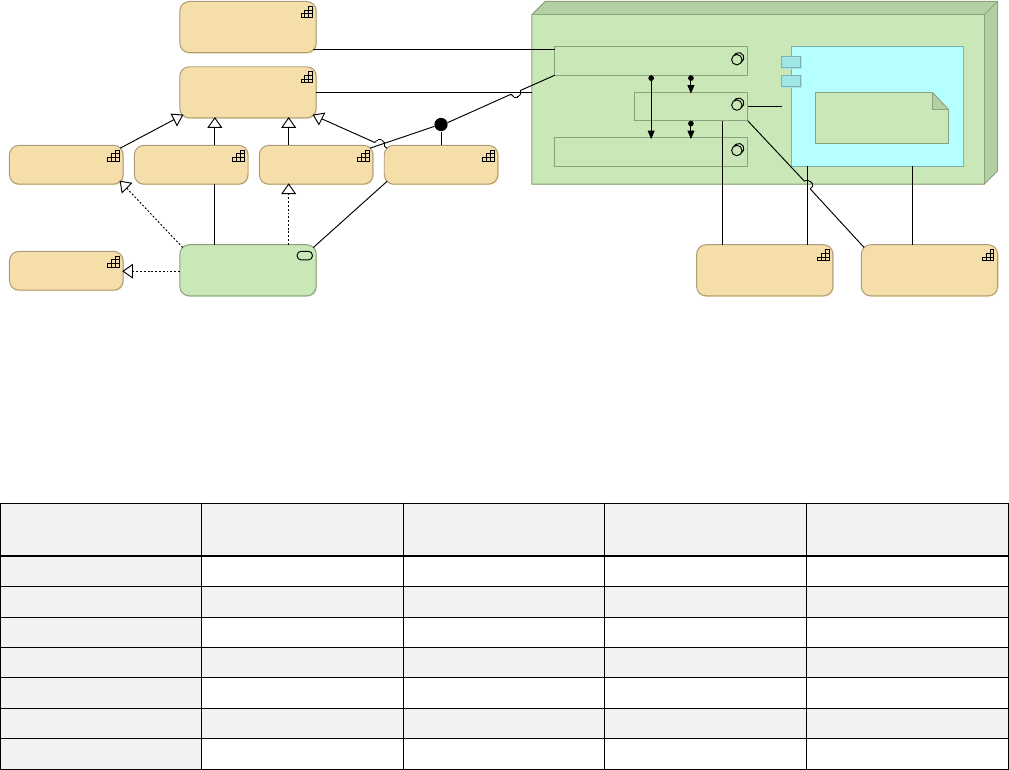

Figure 1. Relevant elements of the organizational structure of Acme Insurance.

A glimpse into Acme Insurance's baseline Technology

Architecture

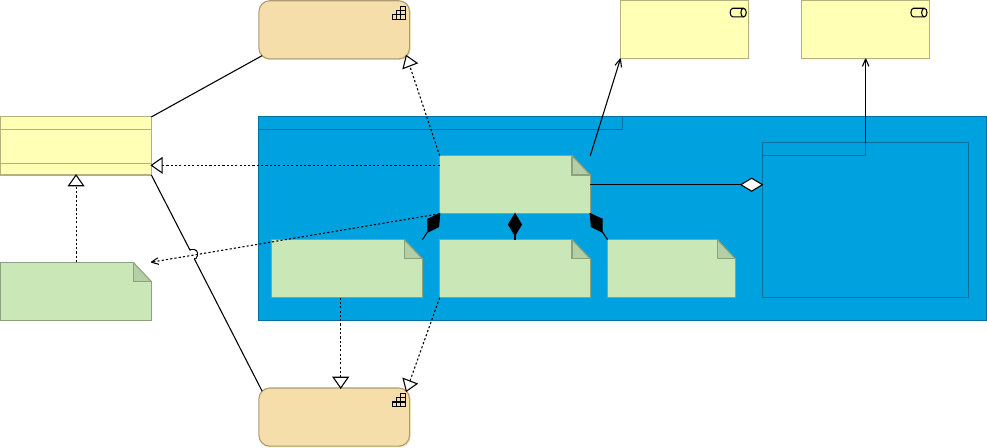

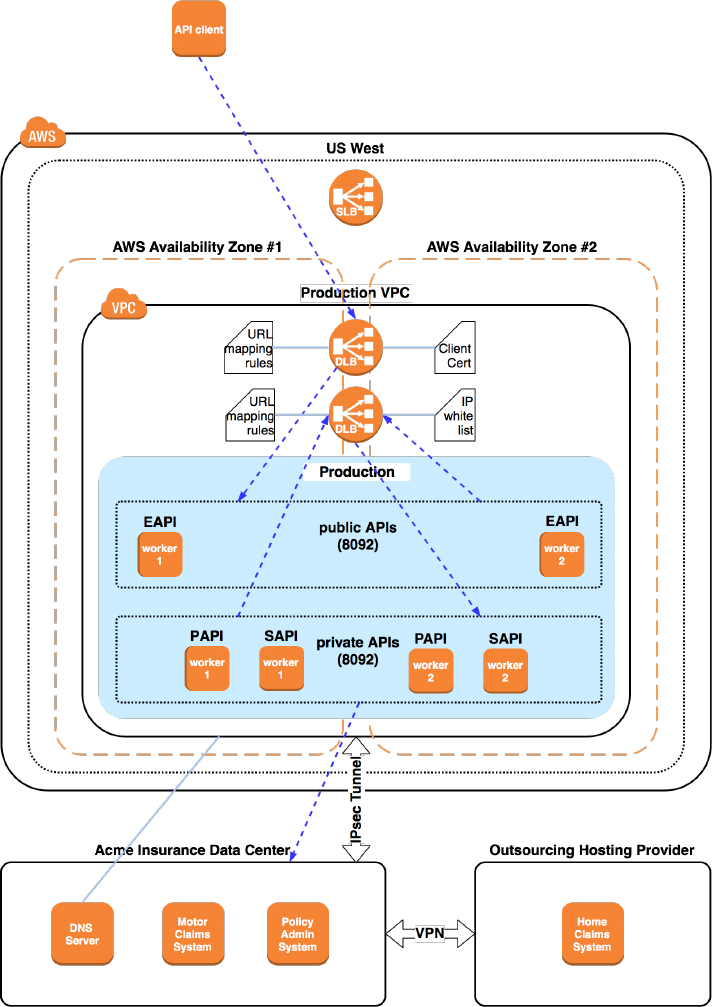

The baseline Technology Architecture of Acme Insurance can, at a very high level, be

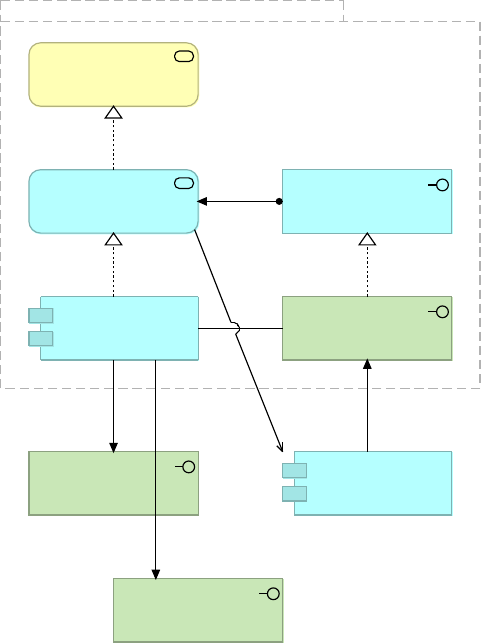

characterized as follows (Figure 2):

•Acme Insurance operates an IBM-centric data center with the Acme Insurance Mainframe

and clusters of AIX machines

•The Policy Admin System runs on the Mainframe and is used by both Motor and Home

Underwriting. However, support for Motor and Home policies was added to the Policy Admin

System in different phases and so uses different data schemata and database tables

•The Motor Claims System is operated in-house on a WebSphere application server cluster

deployed on AIX

•The Home Claims System is a different system, operated by an external hosting provider

and accessed over the web

•Both claims systems are accessed by Acme Insurance’s own Claims Adjudication team from

their workstations

•Simple claims administration is handled by an outsourced call center, also via the Motor

Claims System and Home Claims System


[Figure 2 labels: Acme Insurance Data Center; Mainframe; Policy Admin System; Rating Engine; WebSphere AIX Cluster; Motor Claims System; Outsourcing Hosting Provider; Unspecified Infrastructure; Home Claims System; VPN; Backoffice; Motor Underwriting Workstations; Home Underwriting Workstations; Claims Adjudication Workstations; Outsourced Call Center; Motor Claims Admin Workstations; Home Claims Admin Workstations]

Figure 2. A small subset of the baseline Technology Architecture of Acme Insurance.

Beware of the two completely distinct meanings of the term "policy" in this

course: insurance policy on the one hand and API policy on the other hand. To

avoid confusion, the latter will always be referred to using the complete term

"API policy".

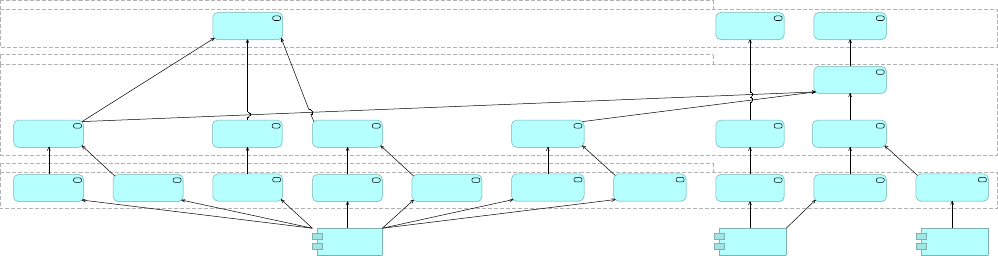

Acme Insurance's motivation to change

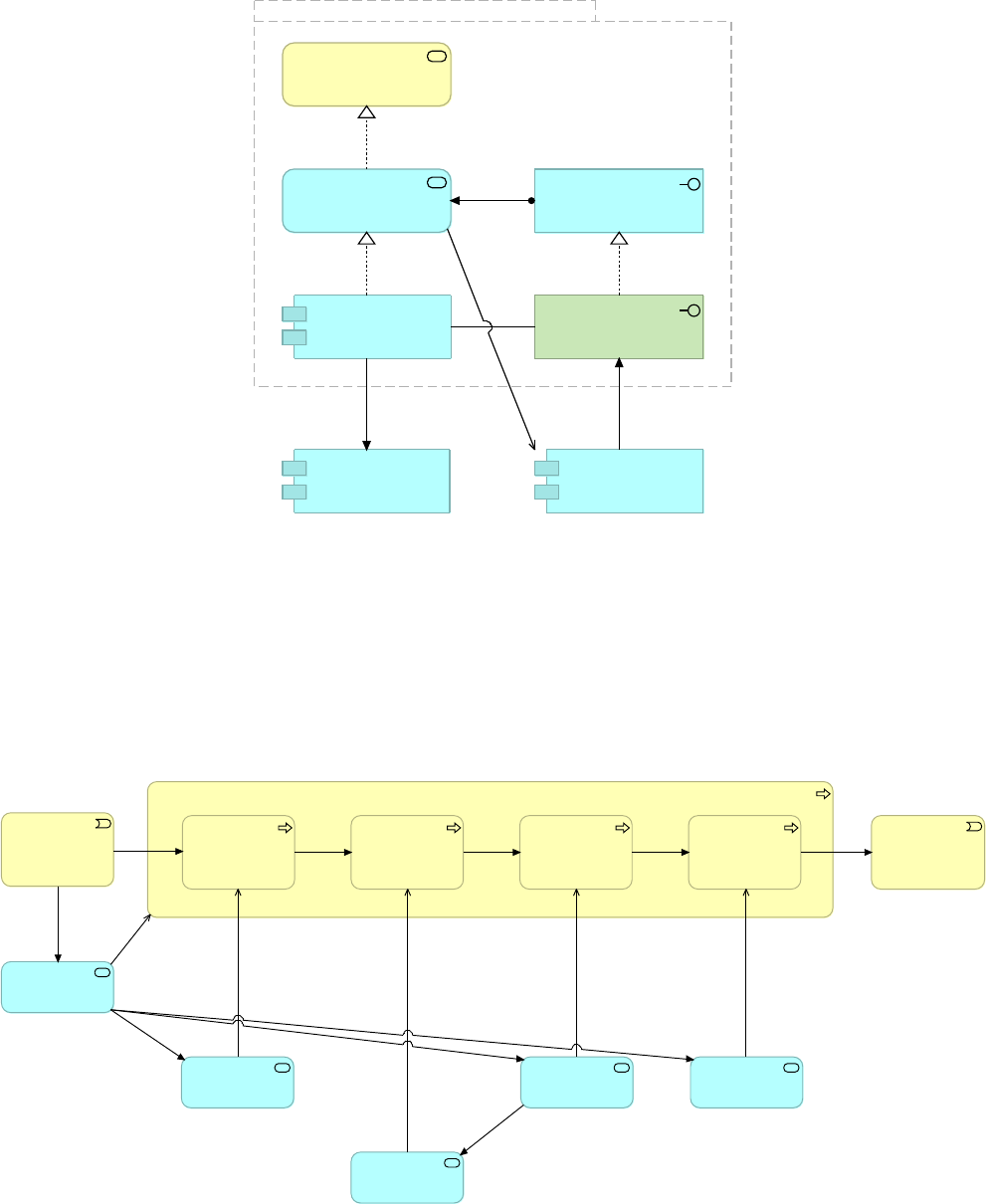

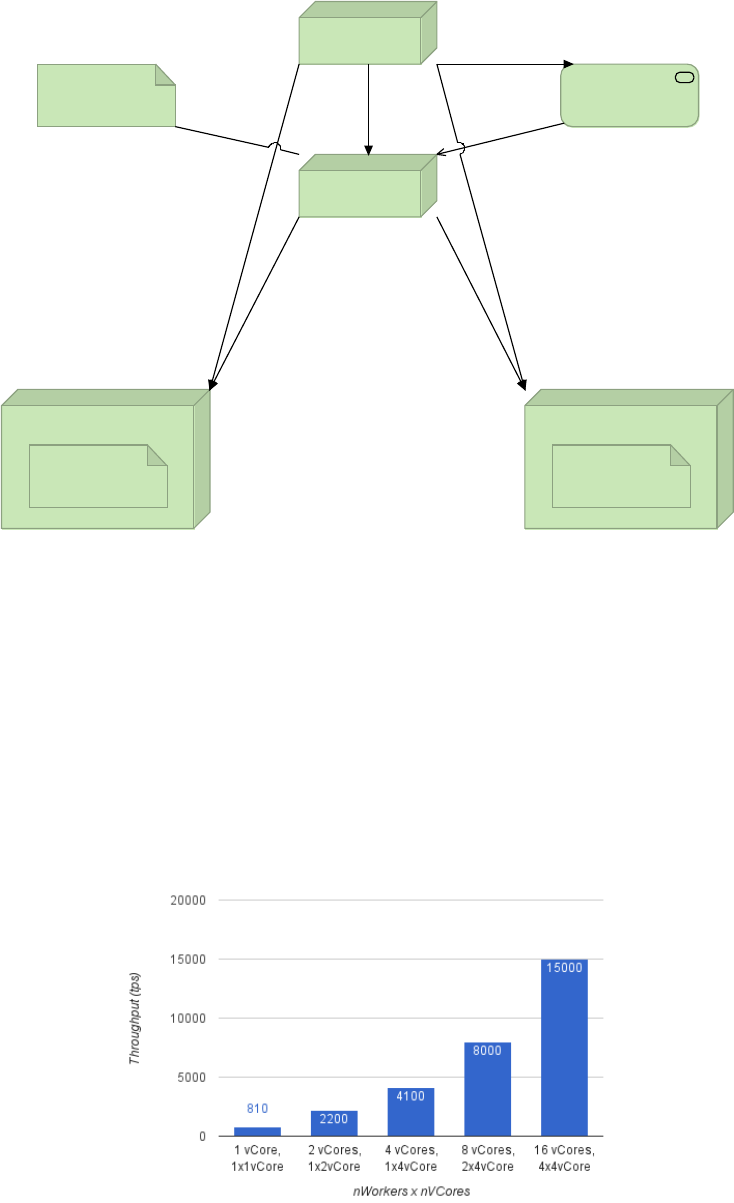

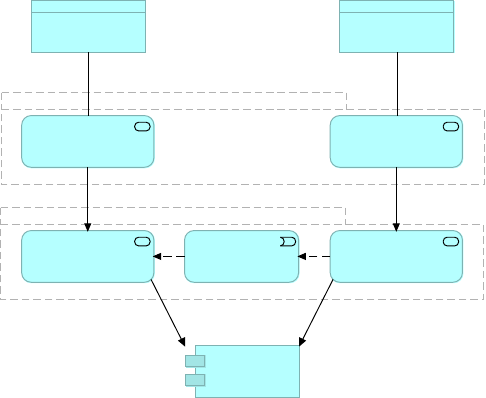

Under pressure from The New Owners, Acme Insurance executives embark on two immediate

strategic initiatives (Figure 3):

•Open-up to external price comparison services ("Aggregators") for motor insurance: This

contributes to the goal of establishing new sales channels, which in turn (positively)

influences the driver of increasing revenue, which is important to all management

stakeholders

•Provide (minimal) self-service capabilities to customers: This contributes to the goal of

increasing the prevalence of customer self-service, which in turn (positively) influences the

driver of reducing cost, which is important to all management stakeholders as well as to

Corporate IT

Not immediately relevant, but clearly on the horizon, are the following far-reaching changes:

•Replace the two bespoke claims handling systems, the Motor Claims System and Home

Claims System, with one COTS product: This contributes to the principle of preferring COTS


solutions, which in turn contributes to Corporate IT’s goal of standardizing IT systems

across all subsidiaries of The New Owners

•Replace the legacy policy rating engine with a corporate standard: This contributes to the

principle of reusing custom software (such as the corporate standard policy rating engine)

where possible, which in turn contributes to Corporate IT’s goal of standardizing IT systems

[Figure 3 labels: The New Owners; New Sales Channels; Revenue Increase; Cost Reduction; Customer Self-Service; Acme Insurance Execs; Corporate IT; IT System Standardization; Prefer COTS; Reuse Custom Software; Provide Self-Service Capabilities; Open to Aggregators; Replace Rating Engine; Replace Claims Systems]

Figure 3. Acme Insurance's immediate and near-term strategic change initiatives, their rationale and stakeholders.


Module 1. Putting the Course in Context

Objectives

•Define Outcome-Based Delivery (OBD)

•Describe how this course is aligned to parts of OBD

•Use essential course terminology correctly

•Recognize the ArchiMate 3 notation subset used in this course

1.1. Understanding the course organization

1.1.1. Introducing Outcome-Based Delivery - OBD

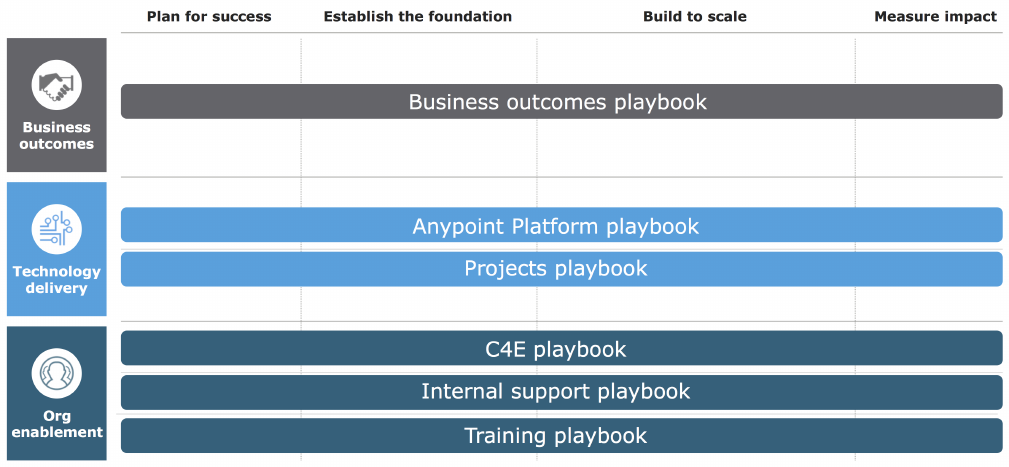

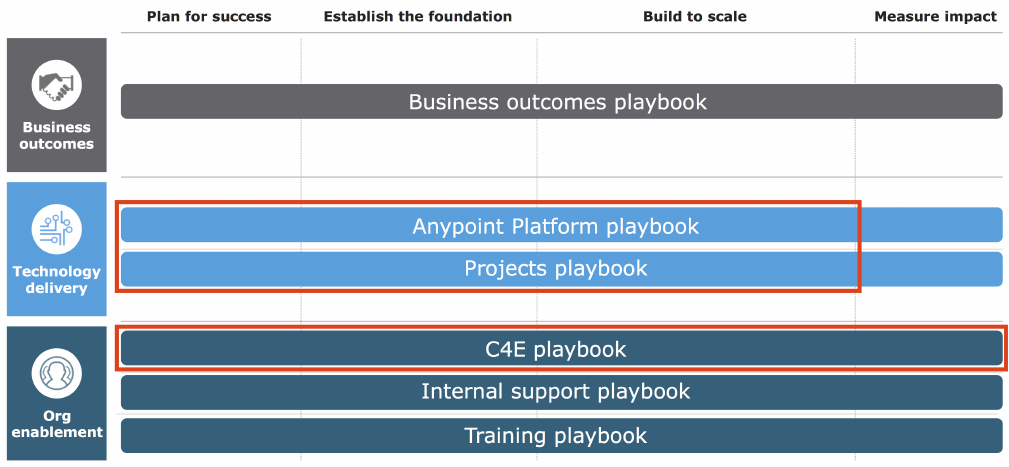

OBD is a framework and methodology for enterprise integration delivery proposed by

MuleSoft. It takes a holistic view of delivering integration capabilities, addressing

•Business outcomes, incl. alignment and governance of integration capability delivery

•Technology delivery, separating

◦cross-project platform concerns

◦from the delivery of projects

•Organizational enablement, specifically

◦the establishment of a Center for Enablement in the organization

◦the professional IT support for Anypoint Platform with the help of the MuleSoft support

organization

◦training of all staff involved in delivering integration capabilities


Figure 4. OBD holistically addresses all aspects of integration capability delivery into an

organization.

1.1.2. Course content in the context of OBD

OBD is materialized by "playbooks", where each playbook addresses one of the dimensions of OBD and identifies activities along that dimension. This course is aligned with the principles of OBD and the OBD playbooks, but it does not follow the exact naming or sequential order of the playbooks' activities.

Being an architecture course, it focuses on these dimensions of OBD:

•Technology delivery, both from an Anypoint Platform and projects perspective

•Organizational enablement through the C4E

However:

•Iteration is at the heart of OBD, but this course does not iterate

◦Every topic is discussed once, in the light of different aspects of the case study, which

would in the real world be addressed in different iterations

•OBD stresses planning, but this course does not simulate planning activities or present

plans

•Discussion of organizational enablement and the C4E is light and mainly introduces the

concept and a few ideas on how to measure the C4E’s impact with KPIs


Figure 5. This course focuses on architectural aspects of technology delivery and introduces

the C4E.

1.2. Understanding essential course terminology

1.2.1. Defining API

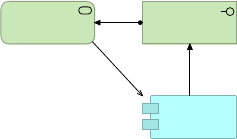

See the corresponding glossary entry, cf. Figure 6.

1.2.2. Defining API client

See the corresponding glossary entry.

1.2.3. Defining API consumer

See the corresponding glossary entry.

1.2.4. Defining API implementation

See the corresponding glossary entry.

1.2.5. Defining API-led connectivity

See the corresponding glossary entry.


1.2.6. Simplified notion of API

In colloquial usage of the term API, departing from the precise - and somewhat confining -

definition given in 1.2.1, API often refers not just to the application interface but to the

combination of

•a programmatic application interface

◦i.e., the precise meaning of API

•the application service to which this application interface provides access

•the business service realized by that application service

•the HTTP-based technology interface realizing the application interface in concrete technical

terms

•and, importantly, the application component implementing the functionality exposed by the

application interface

◦i.e., the API implementation

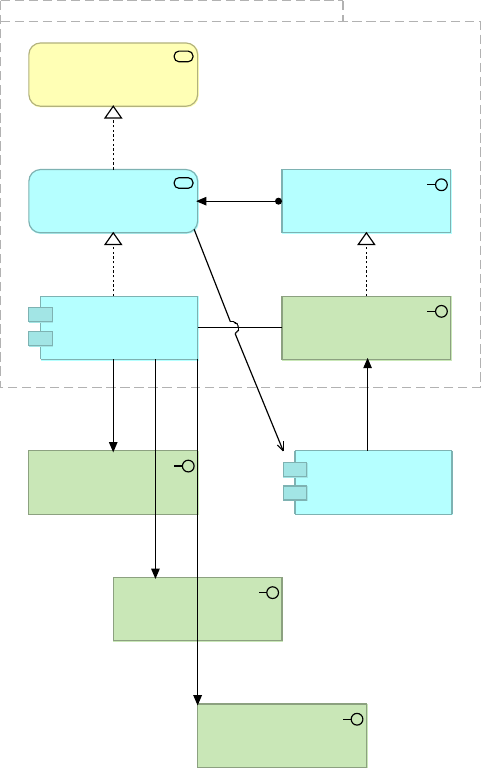

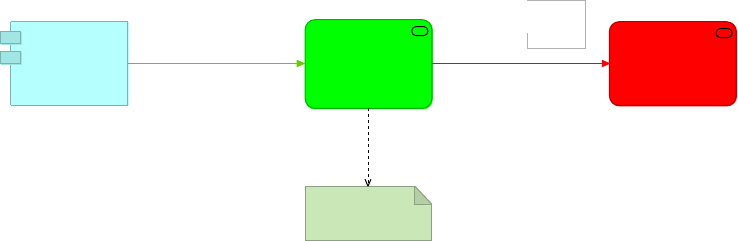

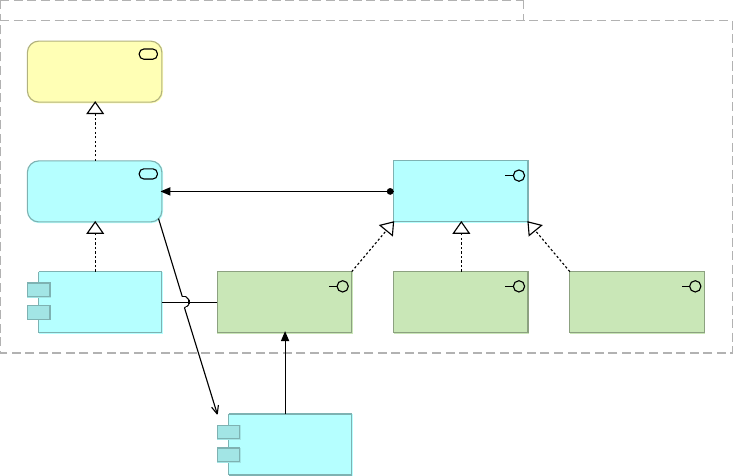

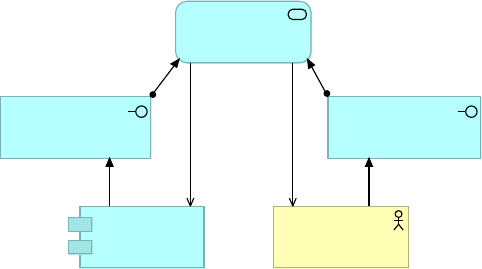

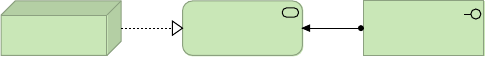

•See Figure 6, cf. Figure 138

API Client

Simplified

notion of API

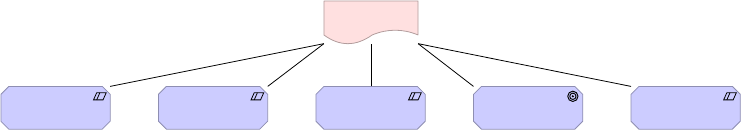

Figure 6. The simplified notion of API merges the aspects of application interface, technology

interface, API implementation, application service and business service and is here

represented visually as an application service element with the name of the API. An API in this

simplified sense directly serves an API client and is invoked (triggered) by that API client.

This simplified notion of API is justified because in a very significant number of cases there is

exactly one instance of each of these elements per API. Indeed, striving for a 1-to-1

relationship between API as application interface and API implementation in particular is

usually advisable as it helps combat the complexity of large application networks. This is also

the approach followed in this course.

Using this simplified notion of API:


•Experience APIs are shown invoking Process APIs and Process APIs are shown invoking

System APIs, although, in reality, it is only the API implementation of the Experience API

that depends on the technology interface of the Process API and, at runtime, through that

interface, invokes the API implementation of the Process API; and it is only the API

implementation of the Process API that depends on the technology interface of the System

API and, at runtime, through that interface, invokes the API implementation of the System

API.

•It is possible to say that an “API is deployed to a runtime” when in reality it is only the API

implementation (the application component) that is deployable.

In other contexts (not in this course), the terms "service" or "microservice" are used for the

same purpose as the simplified notion of API.

When the simplified notion of API is dominant then the pleonasm "API interface" is sometimes

used to specifically address the interface-aspect of the API in contrast to its implementation-

aspect.

For instance:

•If the Auto policy rating API were just exposed over one HTTP-based interface, e.g., a

JSON/REST interface, then the simplified notion of this API would comprise:

◦Technology interface: Auto policy rating JSON/REST programmatic interface

◦Application interface: Auto policy rating

◦Business service: Auto policy rating

◦The application component (API implementation) implementing the exposed functionality

•However, since the Auto policy rating API (in the strict sense of application interface) is also

realized by a second technology interface, the Auto policy rating SOAP programmatic

interface, it is not clear whether the simplified notion of API comprises both technology

interfaces or not. It is therefore preferred, in complex cases such as this, to use the term

API only in its precise sense, i.e., as a special kind of application interface as defined in

1.2.1.
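The difference between the simple and the complex case can be made concrete with a small data model. The following Python sketch is purely illustrative and not part of the course's architecture models: the class names `TechnologyInterface` and `Api`, their fields, the method `simplified_notion_is_unambiguous` and the implementation name are all assumptions introduced here. It records an API in the precise sense together with its technology interfaces and its API implementation, and checks whether the simplified notion of API can be applied without ambiguity.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass(frozen=True)
class TechnologyInterface:
    """A concrete HTTP-based realization of an application interface."""
    name: str
    style: str  # e.g. "JSON/REST" or "SOAP"


@dataclass
class Api:
    """An API in the precise sense: an application interface (hypothetical model)."""
    name: str              # the application interface
    business_service: str  # the business service realized through the API
    implementation: str    # the application component, i.e. the API implementation
    technology_interfaces: list[TechnologyInterface] = field(default_factory=list)

    def simplified_notion_is_unambiguous(self) -> bool:
        # The simplified notion assumes exactly one technology interface per API.
        return len(self.technology_interfaces) == 1


rest = TechnologyInterface("Auto policy rating JSON/REST programmatic interface", "JSON/REST")
soap = TechnologyInterface("Auto policy rating SOAP programmatic interface", "SOAP")

# Simple case: a single technology interface, so the simplified notion applies.
# The implementation name below is a made-up placeholder.
simple_case = Api("Auto policy rating", "Auto policy rating",
                  "Auto policy rating API implementation", [rest])

# Complex case: a second technology interface makes the simplified notion unclear.
complex_case = Api("Auto policy rating", "Auto policy rating",
                   "Auto policy rating API implementation", [rest, soap])

print(simple_case.simplified_notion_is_unambiguous())
print(complex_case.simplified_notion_is_unambiguous())
```

In the complex case the check fails, which mirrors the recommendation to fall back to the precise meaning of API whenever more than one technology interface realizes the same application interface.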

1.2.7. Defining application network

See the corresponding glossary entry.

Summary

•Outcome-Based Delivery (OBD) is a holistic framework and methodology for enterprise integration delivery proposed by MuleSoft, addressing

◦Business outcomes

◦Technology delivery in the form of platform delivery and delivery of projects

◦Organizational enablement through the Center for Enablement (C4E), support for Anypoint Platform and training

•This course is aligned with the technology delivery and C4E dimensions of OBD

•An API is an application interface, typically with a business purpose, to programmatic API

clients using HTTP-based protocols

•Sometimes the term "API" also denotes the API implementation, i.e., the underlying

application component that implements the API’s functionality

•API-led connectivity is a style of integration architecture that prioritizes APIs and assigns

them to three tiers

•Application network is the state of an Enterprise Architecture that emerges from the

application of API-led connectivity and fosters governance, discoverability, consumability

and reuse


Module 2. Introducing MuleSoft, the Application Network Vision and Anypoint Platform

Objectives

•Articulate MuleSoft’s mission

•Explain MuleSoft’s proposal for closing the increasing IT delivery gap

•Describe the capabilities and high-level components of Anypoint Platform

2.1. Introducing MuleSoft

2.1.1. MuleSoft's mission

MuleSoft has been on a mission since 2003 to connect the world’s applications, data and

devices. MuleSoft started solving the classic on-premises backend integration problems with

an open source, light-weight ESB: Mule ESB. Since then, MuleSoft has grown to provide a

comprehensive platform to help companies with today’s business challenges.

MuleSoft mission statement:

To connect the world’s applications, data and devices to transform business

MuleSoft’s mission is to enable companies to transform and compete in a vastly changing

digital world.

2.1.2. The story behind the name

Frustrated by integration "donkey work", Ross Mason, VP Product Strategy, founded the open

source Mule project in 2003. He created a new platform that emphasized ease of development

with quick and efficient assembly of components, instead of custom-coding by hand.

2.1.3. MuleSoft customers

•More than 175,000 developers worldwide

•More than 1100 customers

•3 of the top 10 global auto companies

•5 of the top 10 global banks

•2 of the top 5 global retailers


2.1.4. Company overview

•Company

◦Founded in 2006, HQ: San Francisco

◦22 offices world-wide

◦1300+ employees worldwide

◦Nearly 70% new subscription bookings driven by APIs, mobile and SaaS integration

•Products

◦MuleSoft’s Anypoint Platform addresses on-premises, cloud and hybrid integration use

cases with scale and ease-of-use

◦Subscription model with various packaged options to serve different use cases

2.2. Introducing the application network vision

This module aims to give a simplified, easily understandable introduction to

concepts that will be developed and applied in much more detail and depth in

the remainder of this course - API-led connectivity and application networks.

As such, this section contains a somewhat superficial comparison to SOA, does

not make use of the Acme Insurance case study and intentionally glosses over

more subtle but important aspects - which will be discussed in later course

modules.

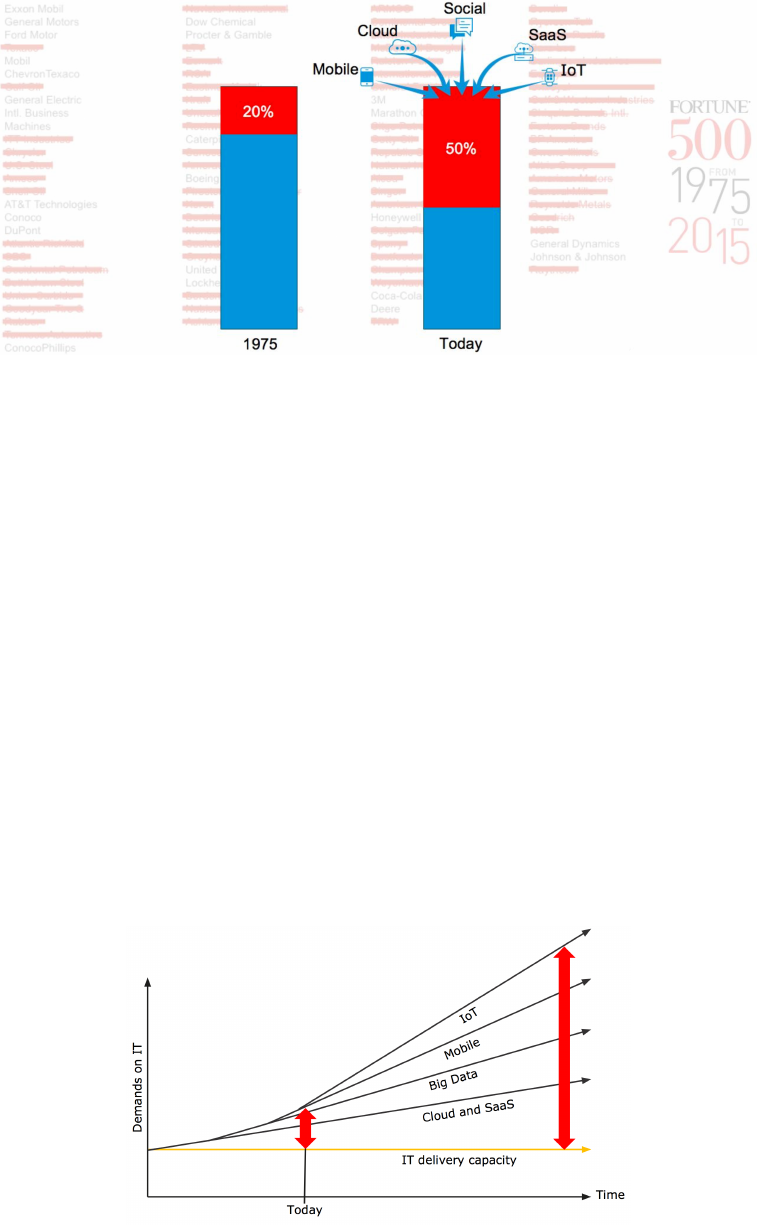

2.2.1. 10-year turnover in Fortune 500 companies

A convergence of forces (mobile, SaaS, Cloud, Big Data, IoT and Social) is creating a pressing need for companies to change speed in order to remain competitive and/or lead the market.

It used to be that 80% of companies on the Fortune 500 would still be there after a decade.

Today, with these forces, enterprises have no better than a 1-in-2 chance of remaining in the

Fortune 500.


Figure 7. Fortune 500 10-year turnover.

To succeed, companies need to be driving a very different clock speed and embrace change;

change has become a constant. Successful companies are leveraging the aforementioned

forces to be competitive and in some cases dominate their markets.

Business is pushing to move at much faster speeds than IT and technology are able to.

Technology and IT are holding back business rather than enabling it.

MuleSoft is helping 1100+ enterprise customers undertake transformative changes and so has a unique perspective on the market. The CIOs of MuleSoft’s customers say that they have to achieve 3-5 times higher speed as a business within the next 2-3 years to compete.

2.2.2. Digital pressures create a widening IT delivery gap

While IT delivery capacity remains nearly constant, the compound effect of the

aforementioned forces (mobile, SaaS, Cloud, Big Data, etc.) leads to ever-increasing demands

on IT. The result is a widening IT delivery gap.

Figure 8. Illustration of the widening IT delivery gap caused by various forces.


2.2.3. Current responses are insufficient

Current responses to that widening IT delivery gap are not sufficient:

•Working harder is not sustainable

•Over-outsourcing exacerbates the situation

•Agile and DevOps are important and helpful but not sufficient

2.2.4. Learning from other industries

Companies such as

•McDonald’s

•Subway

•Marriott

•Amazon

have shown the value of these approaches:

•Create scale through reuse

•Enable self-service

•Encourage innovation "at the edge"

•Promote quality

•Retain visibility and control

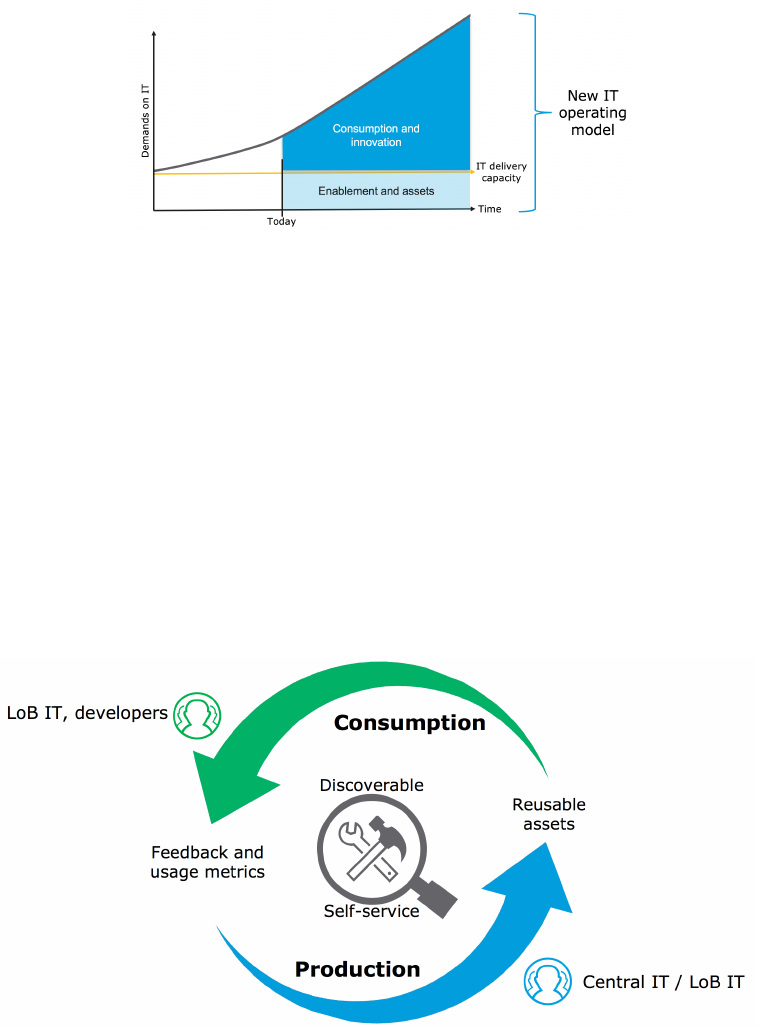

2.2.5. Closing the delivery gap with constant IT delivery capacity

MuleSoft proposes an IT operating model that takes these successful approaches from other

industries on board:

•Even with constant IT delivery capacity, IT can empower "the edge" - i.e., LoB IT and developers - by creating assets and by helping the edge to create the assets it requires.

•Consumption of those assets and the innovation enabled by those assets can then occur

outside of IT, at the edge, and therefore grow at a considerably faster rate than IT delivery

capacity itself.

•In this way, the ever-increasing demands on IT can be met even though IT delivery

capacity stays approximately constant.


Figure 9. How MuleSoft's proposal for an IT operating model that distinguishes between

enablement and asset production on the one hand, and consumption of those assets and

innovation on the other hand, allows the increasing demands on IT to be met at constant IT

delivery capacity.

2.2.6. An operating model that emphasizes consumption

IT is now the steward of this operating model, a virtuous cycle in which IT produces reusable assets and enables their consumption by LoB IT and developers by making the assets discoverable and available for self-service, while monitoring feedback and usage.

Central IT needs to move away from trying to deliver all IT projects themselves and start

building reusable assets to enable the business to deliver some of their own projects.

Figure 10. An IT operating model that emphasizes the consumption of assets by LoB IT and

developers as much as the production of these assets.

•The key to this strategy is to emphasize consumption as much as production

•Traditional IT approaches (for example, SOA) focused exclusively on production for the

delivery of projects

•In this operating model, IT changes its mindset to think about producing assets that will be

consumed by others in lines of business


•The assets need to be discoverable and developers need to be enabled to self-serve them in

projects

•The virtuous part of the cycle is to get active feedback from the consumption model along

with usage metrics to inform the production model
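The cycle of production, discovery, self-service consumption and feedback can be sketched as a toy model. The following Python sketch is an illustration only, under stated assumptions: the class `AssetCatalog`, its methods, and the asset and team names are hypothetical and do not represent any Anypoint Platform component or API.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class AssetCatalog:
    """Hypothetical in-memory catalog of reusable assets (illustration only)."""
    descriptions: dict = field(default_factory=dict)  # asset name -> description
    usage: Counter = field(default_factory=Counter)   # asset name -> number of uses
    feedback: dict = field(default_factory=dict)      # asset name -> list of comments

    def publish(self, name: str, description: str) -> None:
        """Production: IT adds a reusable asset to the catalog."""
        self.descriptions[name] = description
        self.feedback.setdefault(name, [])

    def discover(self, keyword: str) -> list:
        """Discoverability: find assets whose name or description mentions the keyword."""
        needle = keyword.lower()
        return sorted(
            name for name, text in self.descriptions.items()
            if needle in name.lower() or needle in text.lower()
        )

    def consume(self, name: str, consumer: str, comment: str = "") -> None:
        """Self-service consumption: record usage and optional feedback."""
        if name not in self.descriptions:
            raise KeyError(f"unknown asset: {name}")
        self.usage[name] += 1
        if comment:
            self.feedback[name].append((consumer, comment))

    def usage_report(self) -> list:
        """Feedback to production: assets ordered by how often they were consumed."""
        return self.usage.most_common()


catalog = AssetCatalog()
catalog.publish("Customers API", "Consolidated customer data")
catalog.publish("Orders API", "Order status and history")

found = catalog.discover("customer")
catalog.consume("Customers API", consumer="LoB mobile team", comment="needs paging")
catalog.consume("Customers API", consumer="LoB web team")
catalog.consume("Orders API", consumer="LoB web team")
report = catalog.usage_report()
print(found, report)
```

The usage report and the collected comments stand in for the usage metrics and active feedback that inform what IT produces next.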

2.2.7. The modern API as a core enabler of this operating model

In the proposed operating model, the organization packages up core IT assets and capabilities in the modern API.

The modern API is a product with its own software development lifecycle (SDLC), consisting of design, test, build, management and versioning, and it comes with thorough documentation to enable its consumption.

•Modern APIs adhere to standards (typically HTTP and REST) that are developer-friendly, easily accessible and broadly understood

•They are treated more like products than code

•They are designed for consumption by specific audiences (e.g., mobile developers)

•They are documented and versioned

•They are secured, governed, monitored and managed for performance and scale

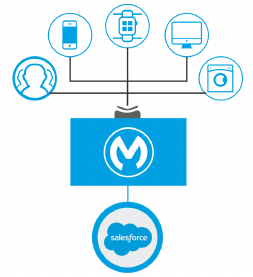

Figure 11. Visualization of how a modern API, productized with the API consumer in mind,

gives various types of API clients access to backend systems.

This approach is different from SOA in these respects:

•Modern APIs are easier to consume than WS-* web services

•SOA was built by IT and for consumption by IT only

•The technology was hard: one can’t just give the extended organization access to WS-* web services, as they wouldn’t be able to use them

◦WS-* web services were not discoverable or consumable by broad developer teams within the ecosystem, including mobile developers

•This left organizations with IT still being the bottleneck

•However, when an organization has a good SOA strategy in place, it will accelerate the

value of what API-led connectivity provides: after all, both SOA and API-led connectivity

revolve around services

2.2.8. The API-led connectivity approach

API-led connectivity is a methodical way to connect data to applications through a series of

reusable and purposeful modern APIs that are each developed to play a specific role – unlock

data from systems, compose data into processes, or deliver an experience.

API-led connectivity provides an approach for connecting and exposing assets through APIs. As

a result, these assets become discoverable through self-service without losing control.

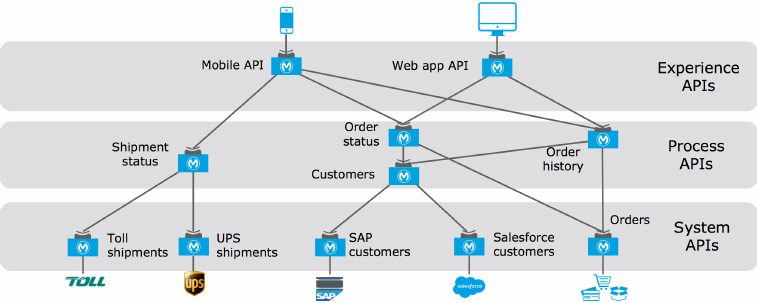

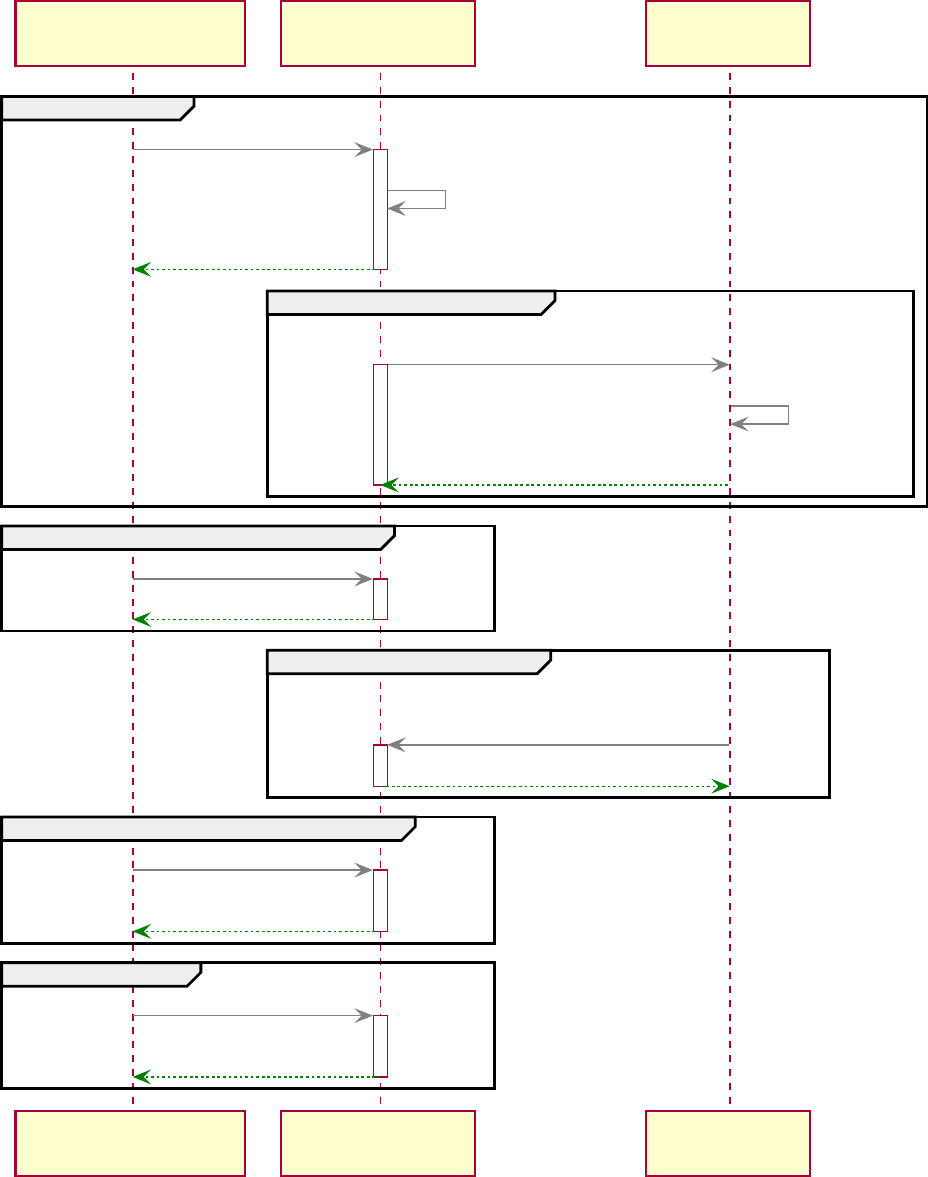

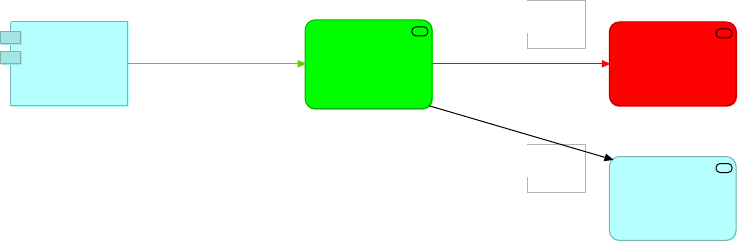

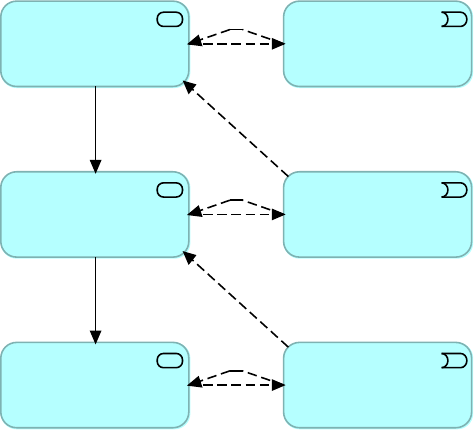

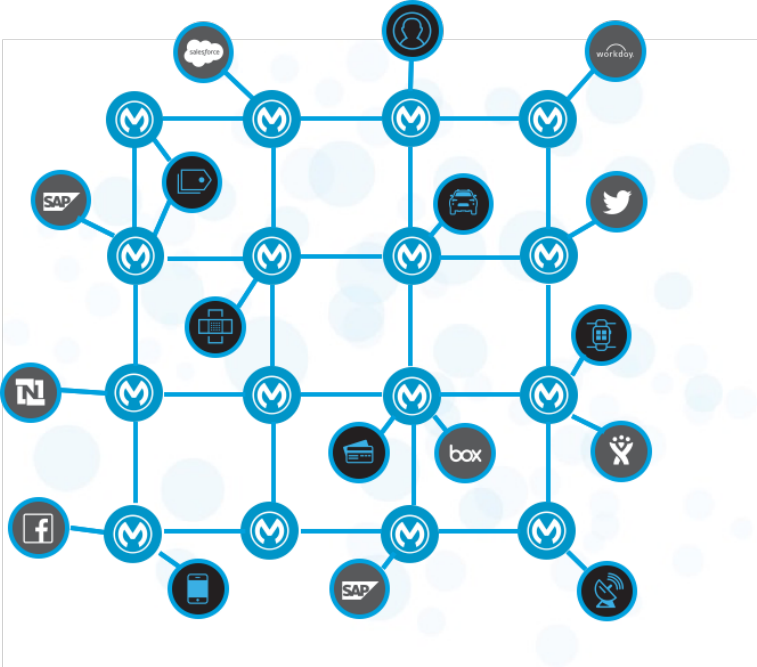

Figure 12. An example of an integration architecture following API-led connectivity principles

by assigning APIs to the tiers of System APIs, Process APIs and Experience APIs.

•System APIs: In the example, data from SAP, Salesforce and ecommerce systems is

unlocked by putting APIs in front of them. These form a System API tier, which provides

consistent, managed, and secure access to backend systems.

•Process APIs: Then, one builds on the System APIs by combining and streamlining customer data from multiple sources into a "Customers" API (breaking down application silos). These Process APIs take core assets and combine them with some business logic to create a higher level of value. Importantly, these higher-level objects are now useful assets that can be further reused, as they are APIs themselves.

•Experience APIs: Finally, an API is built that brings together the order status and history,

delivering the data specifically needed by the Web app. These are Experience APIs that are

designed specifically for consumption by a specific end-user app or device. These APIs allow

app developers to quickly innovate on projects by consuming the underlying assets without


having to know how the data got there. In fact, if anything changes in any of the systems

or processes underneath, it may not require any changes to the app itself.

With API-led connectivity, when tasked with a new mobile app, there are now reusable assets

to build on, eliminating a lot of work. It is now much easier to innovate.
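The layering described above can be sketched in a few lines of Python. This is only an illustration of the three tiers, not how Mule applications are written: the in-memory dictionaries stand in for SAP, Salesforce and the ecommerce system, and every function name and data field is a hypothetical choice made for this example.

```python
# Hypothetical in-memory stand-ins for the backend systems in Figure 12.
SAP_CUSTOMERS = {"c1": {"name": "Ada Lovelace"}}
SALESFORCE_ACCOUNTS = {"c1": {"segment": "premium"}}
ECOMMERCE_ORDERS = {"c1": [{"id": "o1", "status": "shipped"},
                           {"id": "o2", "status": "open"}]}


# System APIs: each one unlocks data from exactly one backend system.
def sap_customer_api(customer_id):
    return SAP_CUSTOMERS[customer_id]


def salesforce_account_api(customer_id):
    return SALESFORCE_ACCOUNTS[customer_id]


def ecommerce_orders_api(customer_id):
    return ECOMMERCE_ORDERS[customer_id]


# Process API: composes several System APIs into one "Customers" view.
def customers_process_api(customer_id):
    customer = dict(sap_customer_api(customer_id))
    customer.update(salesforce_account_api(customer_id))
    customer["orders"] = ecommerce_orders_api(customer_id)
    return customer


# Experience API: shapes the data for one specific consumer (a Web app).
def web_order_status_experience_api(customer_id):
    customer = customers_process_api(customer_id)
    return {
        "displayName": customer["name"],
        "orders": [f'{o["id"]}: {o["status"]}' for o in customer["orders"]],
    }
```

A new mobile app would add its own Experience API on top of `customers_process_api` and reuse everything below it unchanged, which is the reuse effect the text describes.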

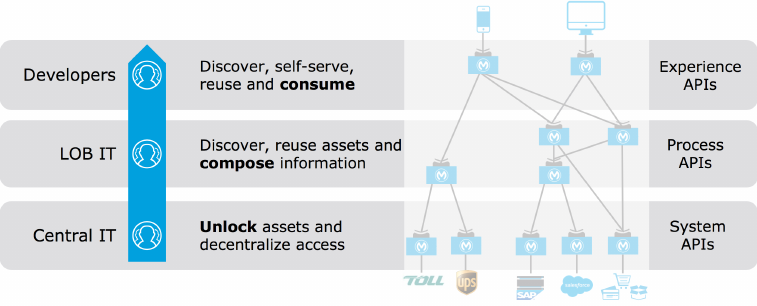

2.2.9. Focus and owners of APIs in different tiers

The organizational approach to API-led connectivity empowers the entire organization to

access their best capabilities in delivering applications and projects through modern APIs that

are developed by the teams that are best equipped to do so due to their roles and knowledge

of the systems they unlock, or the processes they compose, or the experience they’d like to

offer in the application.

•Central IT produces reusable assets, and in the process unlocks key systems, including

legacy applications, data sources, and SaaS apps. This decentralizes and democratizes access to

company data. These assets are created as part of the project delivery process, not as a

separate exercise.

•LOB IT and Central IT can then reuse these API assets and compose process level

information

•App developers can discover and self-serve on all of these reusable assets, creating the

experience-tier of APIs and ultimately the end-applications

It is critical to connect the three tiers so that they drive a production and consumption model

based on reusable assets, which are discovered and self-served by downstream IT and developers.

Figure 13. The APIs in each of the three tiers of API-led connectivity have a specific focus and

are typically owned by different groups.

•API-led connectivity is not an architecture in itself; it is an approach that encourages unlocking

assets and drives reuse and self-service


•API-led connectivity is not just about technology, but is a way to organize people and

processes for efficiencies within the organization

•The APIs depicted in those tiers are actually building blocks that encapsulate connectivity,

business logic, and an interface through which others interact. These building blocks are

productized, fully tested, automatically governed, and fully managed with policies.

•Easy publishing and discovery of APIs is crucial

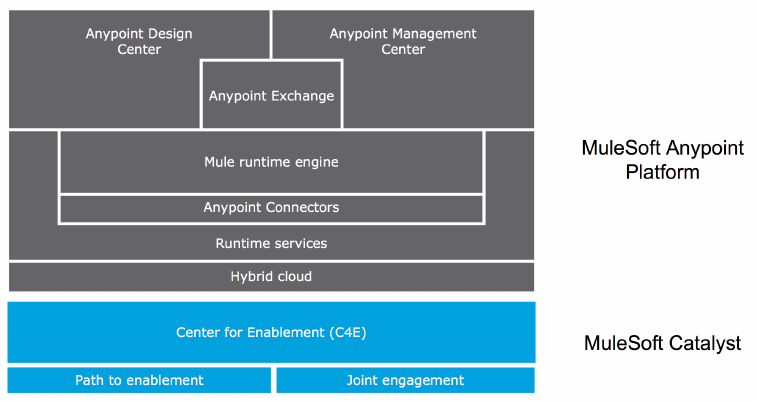

2.2.10. Anypoint Platform and organizational enablement

Figure 14. The components of Anypoint Platform sit on a foundation of organizational

enablement, of which the C4E is one important element. MuleSoft offers various engagement

models to help enable organizations.

2.2.11. Application landscape at the start of the journey

towards the application network


Figure 15. Isolated backend systems before the first project following API-led connectivity.

2.2.12. Every project adds value to the application network

Figure 16. Every project following API-led connectivity not only connects backend systems but

contributes reusable APIs and API-related assets to the application network.

2.2.13. The application network emerges

An application network is built ground-up over time and emerges as a powerful by-product of

API-led connectivity. See Figure 141.

The assets in an application network should be

•discoverable

•self-service

•consumable by the broader organization

Nodes in the application network create new business value.


The application network is recomposable: it is built for change because it "bends but does not

break".

2.3. Highlighting important capabilities needed to

realize application networks

2.3.1. High-level technology delivery capabilities

To perform API-led connectivity an organization has to have a certain set of capabilities, some

of which are provided by Anypoint Platform:

•API design and development

◦i.e., the design of APIs and the development of API clients and API implementations

•API runtime execution and hosting

◦i.e., the deployment and execution of API clients and API implementations with certain

runtime characteristics

•API operations and management

◦i.e., operations and management of APIs and API policies, API implementations and API

invocations

•API consumer engagement

◦i.e., the engagement of developers of API clients and the management of the API clients

they develop

As was also mentioned in the context of OBD in 1.1.1, these capabilities are to be deployed in

such a way as to contribute to and be in alignment with the organization’s (strategic) goals,

drivers, outcomes, etc.

These technology delivery capabilities are furthermore used in the context of an (IT) operating

model that comprises various functions.


[Diagram labels: API-led Connectivity Technology Delivery Capability (API design and development; API runtime execution and hosting; API operations and management; API consumer engagement), provided by Anypoint Platform. Business Outcomes Alignment: Organisation IT-Business Strategy, Constraints, Requirements, Outcomes, Drivers, Principles. Operating Model: Delivery, Architecture, Governance, Security.]

Figure 17. A high-level view of the technology delivery capabilities provided by Anypoint

Platform in the context of various relevant aspects of the Business Architecture of an

organization.

2.3.2. Medium-level technology delivery capabilities

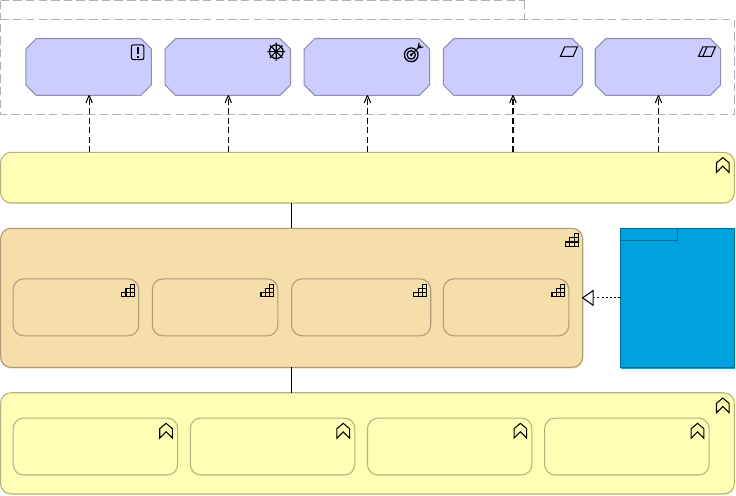

Figure 18 unpacks the technology delivery capabilities introduced at a high level in Figure 17.

Rather than going into a lot of detail now, it is best to just browse through these and revisit

them at the end of the course, matching what was discussed during the course against these

capabilities.


[Diagram labels, grouped by capability:]

•API design and development: Development Accelerators; API Specification Design; API Implementation Design; Artifacts Version Control; API Testing, Simulation and Mocking; Automated Build Pipeline; Reusable Assets Discovery

•API consumer engagement: API Catalog and Portals; API Actionable Documentation; API Consumer and Client On-boarding; API Client Credentials Management; API Analytics relevant to Consumer

•API operations and management: API Versioning; Runtime Analytics and Monitoring; API Analytics relevant to Operator/Provider; API Policy Configuration and Management; API Policy Alerting, Analytics and Reporting; API Usage and Discoverability Analytics

•API runtime execution and hosting: API Proxy Runtime Hosting; Runtime High-availability and Scalability; API Implementation Runtime Hosting; Runtime High-availability and Scalability; Connectivity with External Systems; Orchestration, Routing and Flow Control; Data Transformation

•Supporting Capabilities: Software Development Process; Project Management; Infrastructure Operations

•Context: Business Outcomes Alignment; Operating Model; Anypoint Platform

Figure 18. A medium-level drill-down into the technology delivery capabilities provided by

Anypoint Platform, and some generic supporting capabilities needed for performing API-led

connectivity.

2.3.3. Introducing important derived capabilities related to API

clients and API implementations

API clients and API implementations are application components. For application components,

the capabilities provided by Anypoint Platform enable the following important specific features,

properties and activities:

•Backend system integration

•Fault-tolerant API invocation

•HA and scalable execution

•Monitoring and alerting of API implementations and, if possible, API clients



Figure 19. Important derived capabilities related to API clients and API implementations.

2.3.4. Introducing important derived capabilities related to

APIs and API invocations

For APIs and API invocations themselves, rather than for the underlying application

components that implement or invoke these APIs, the capabilities provided by Anypoint

Platform enable the following important specific features, properties and activities:

•API design

•API policy enforcement and alerting

•Monitoring and alerting of API invocations

•Analytics and reporting of API invocations, including the reporting on meeting of SLAs

•Discoverable assets for the consumption of anyone interested in the application network,

such as API consumers and API providers

•Engaging documentation, primarily for the consumption of API consumers

•Self-service API client registration for API consumers


Figure 20. Important derived capabilities related to APIs and API invocations.

2.4. Introducing Anypoint Platform


2.4.1. Revisiting Anypoint Platform components

For resources that introduce Anypoint Platform see Course prerequisites.

What follows is a brief recap of the components of Anypoint Platform:

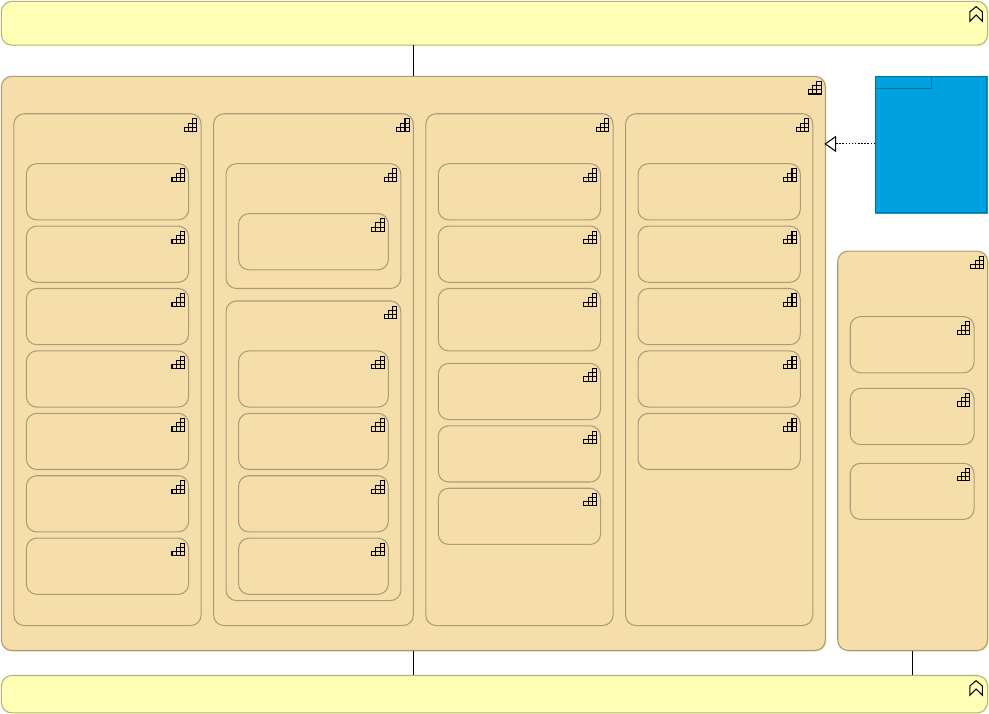

•Anypoint Design Center: Development tools to design and implement APIs, integrations and

connectors [Ref2]:

◦API designer

◦Flow designer

◦Anypoint Studio

◦Connector DevKit

◦APIKit

◦MUnit

◦RAML SDKs

•Anypoint Management Center: Single unified web interface for Anypoint Platform

administration:

◦Anypoint API Manager [Ref3]

◦Anypoint Runtime Manager [Ref5]

◦Anypoint Analytics [Ref7]

◦Anypoint Access management [Ref6]

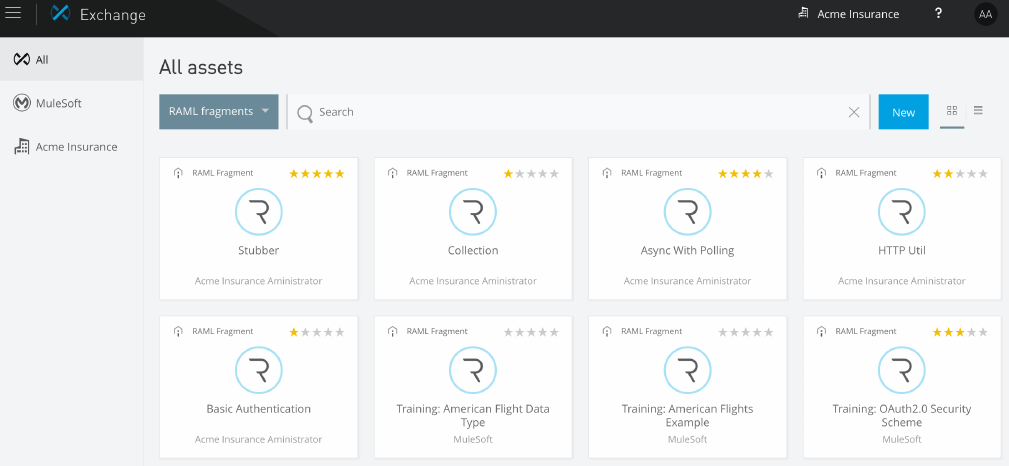

•Anypoint Exchange: Save and share reusable assets publicly or privately [Ref4]. Preloaded

content includes:

◦Anypoint Connectors

◦Anypoint Templates

◦Examples

◦WSDLs

◦RAML APIs

◦Developer Portals

•Mule runtime and Runtime services: Enterprise-grade security, scalability, reliability and

high availability:

◦Mule runtime [Ref1]

◦CloudHub

◦Anypoint MQ [Ref8]

◦Anypoint Enterprise Security


◦Anypoint Fabric

▪Worker Scaleout

▪Persistent VM Queues

◦Anypoint Virtual Private Cloud (VPC)

•Anypoint Connectors:

◦Connectivity to external systems

◦Dynamic connectivity to APIs

◦Build custom connectors using Connector DevKit/SDK

•Hybrid cloud

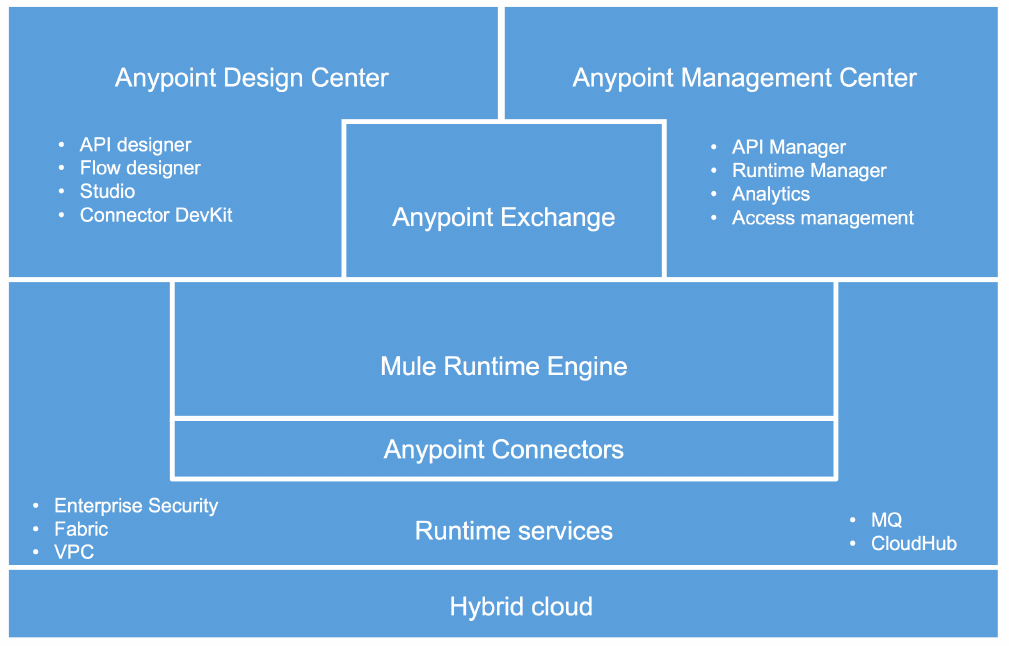

Figure 21. Anypoint Platform and its main components.


Figure 22. Some of the Anypoint Connectors published in Anypoint Exchange.

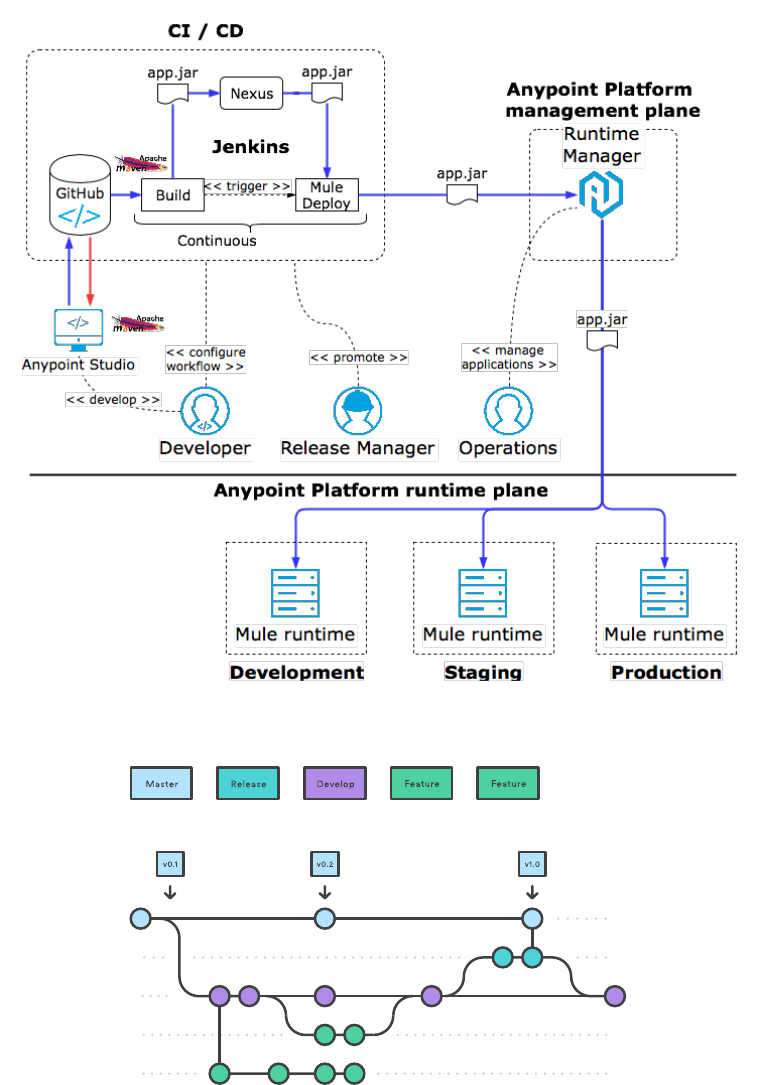

2.4.2. Understanding automation on Anypoint Platform

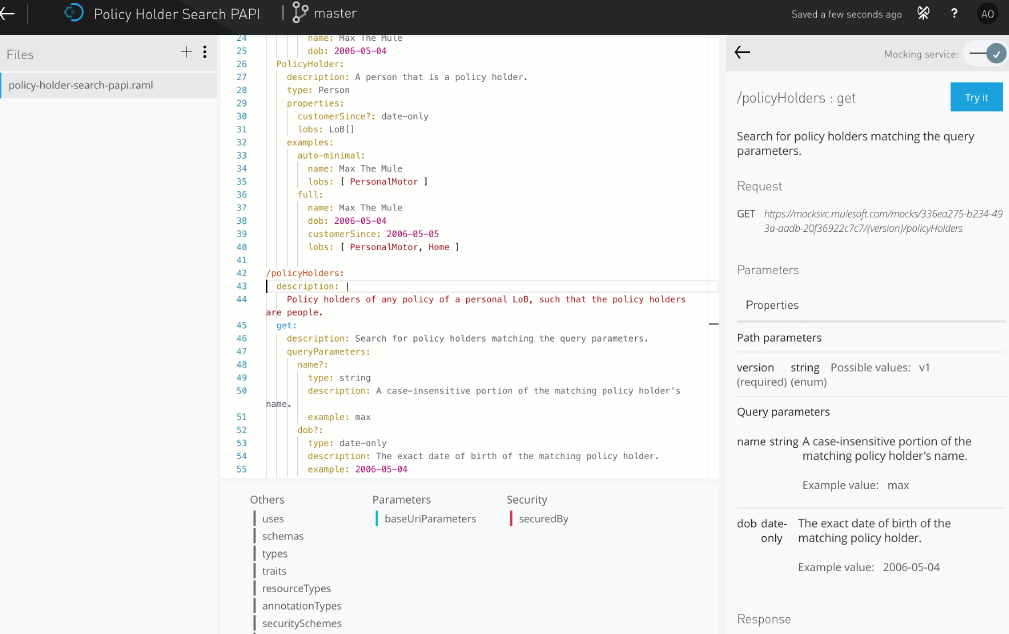

Anypoint Platform exposes a consolidated web UI for easy interaction with users of all levels of

expertise. Screenshots in this course are from that web UI.

All functionality exposed in the web UI is also available via Anypoint Platform APIs: these are

JSON REST APIs which are also invoked by the web UI. Anypoint Platform APIs enable

extensive automation of the interaction with Anypoint Platform.
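A minimal sketch of what scripting against such a JSON REST API could look like, using only the Python standard library. The two base URLs are the control plane addresses this course lists in 3.2.2. The `/accounts/login` path, the payload field names and the helper `build_login_request` are illustrative assumptions, so check the Anypoint Platform API documentation before relying on them. The request is only constructed here, not sent.

```python
import json
import urllib.request

# Control plane base URLs as listed for the MuleSoft-hosted option in 3.2.2.
CONTROL_PLANES = {
    "us": "https://anypoint.mulesoft.com",
    "eu": "https://eu1.anypoint.mulesoft.com",
}

# ASSUMPTION: illustrative path, to be verified against the official API docs.
LOGIN_PATH = "/accounts/login"


def build_login_request(region, username, password):
    """Build (but do not send) a JSON POST request to a control plane."""
    # ASSUMPTION: the payload field names are hypothetical.
    body = json.dumps({"username": username, "password": password}).encode("utf-8")
    return urllib.request.Request(
        url=CONTROL_PLANES[region] + LOGIN_PATH,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )
```

In a CI/CD job, a request like this would be passed to `urllib.request.urlopen` and the JSON response parsed with `json.loads`; credentials would come from the pipeline's secret store rather than from source code.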

MuleSoft also provides higher-level automation tools that capitalize on the presence of

Anypoint Platform APIs:

•Anypoint CLI, a command-line interface providing a user-friendly interactive layer on top of

Anypoint Platform APIs

•Mule Maven Plugin, a Maven plugin automating packaging and deployment of Mule

applications (including API implementations) to all kinds of Mule runtimes, typically used in

CI/CD (9.1.2)

Related to this discussion is the observation that Anypoint Exchange is also accessible as a

Maven repository. This means that a Maven POM can be configured to deploy artifacts into

Anypoint Exchange and retrieve artifacts from Anypoint Exchange, just like with any other

Maven repository (such as Nexus).

Summary

•MuleSoft’s mission is "To connect the world’s applications, data and devices to transform


business"

•MuleSoft proposes to close the increasing IT delivery gap through a consumption-oriented

operating model with modern APIs as the core enabler

•API-led connectivity defines tiers for Experience APIs, Process APIs and System APIs with

distinct stakeholders and focus

•An application network emerges from the repeated application of API-led connectivity and

stresses self-service consumption, visibility, governance and security

•Anypoint Platform provides the capabilities for realizing application networks

•Anypoint Platform consists of these high-level components: Anypoint Design Center,

Anypoint Management Center, Anypoint Exchange, Mule runtime, Anypoint Connectors,

Runtime services, Hybrid cloud

•Interaction with Anypoint Platform can be extensively automated


Module 3. Establishing Organizational and

Platform Foundations

Objectives

•Advise on establishing a C4E and identify KPIs to measure its success

•Choose between options for hosting Anypoint Platform and provisioning Mule runtimes

•Describe the set-up of organizational structure on Anypoint Platform

•Compare and contrast Identity Management and Client Management on Anypoint Platform

3.1. Establishing a Center for Enablement (C4E) at

Acme Insurance

3.1.1. Assessing Acme Insurance's integration capabilities

The New Owners contract a well-known management consultancy to assess Acme Insurance’s

capabilities to embark on the strategic initiatives introduced in Acme Insurance's motivation to

change. One focus of this assessment is Acme Insurance’s IT capabilities in general and

integration capabilities in particular. The findings are:

•LoBs (personal motor and home) have a long history of independence, also in IT

•LoBs have strong IT skills, medium integration skills but no know-how in API-led

connectivity or Anypoint Platform

•Acme IT is small but enthusiastic about application networks and API-led connectivity

•DevOps capabilities are present in LoB IT and Acme IT

•Corporate IT lacks the capacity and desire to involve themselves directly in Acme

Insurance’s Enterprise Architecture. All they care about is that corporate principles are

being followed, as summarized in Figure 3

3.1.2. A decentralized C4E for Acme Insurance

Based on the above analysis of Acme Insurance’s integration capabilities and organizational

characteristics, Acme Insurance and Corporate IT agree on the establishment of a C4E at

Acme Insurance with the following guiding principles:

enable

Enables LoBs to fulfil their integration needs


API-first

Uses API-led connectivity as the main architectural approach

asset-focused

Provides directly valuable assets rather than just documentation contributing to this goal

self-service

Assets are to be self-service consumed (initially) and (co-) created (ultimately) by the LoBs

reuse-driven

Assets are to be reused wherever applicable

federated

Follows a decentralized, federated operating model

The decentralized nature of the C4E matches well

•Acme Insurance’s division into LoBs

•the IT skills available in the LoBs

•Acme Insurance’s relationship with The New Owners

Remarks:

•The "enable" principle defines an Outcome-Based Delivery model (OBD) for the C4E

•The principles of "self-service", "reuse-driven" and "federated" promise increased

integration delivery speed

•Overall the C4E aims for a streamlined, lean engagement model, as also reflected in the

"asset-focused" principle

•This discussion omits questions of funding the C4E and whether C4E staff is fully or partially

assigned to the C4E

The following technical business roles are assigned to the C4E at the positions in their

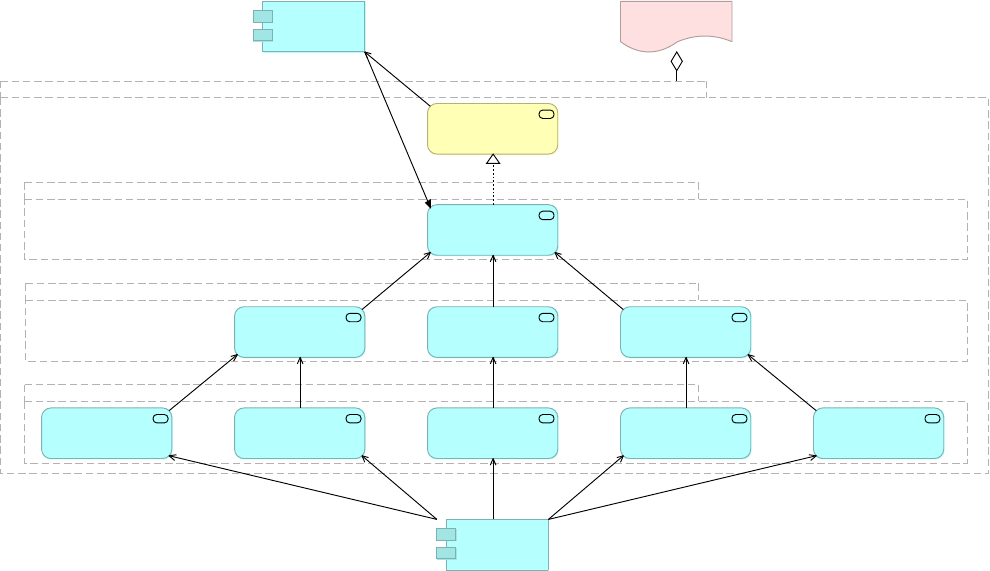

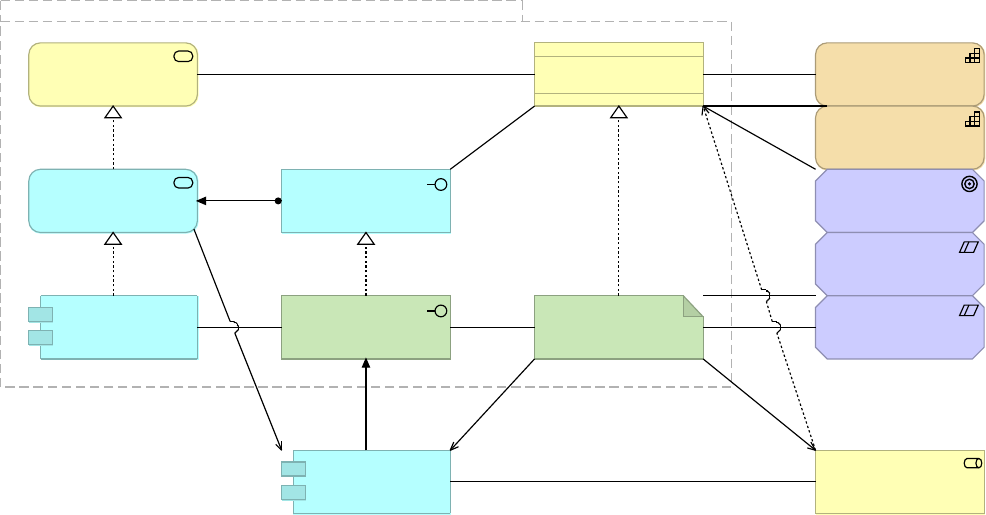

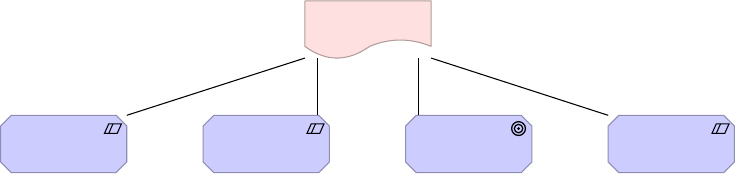

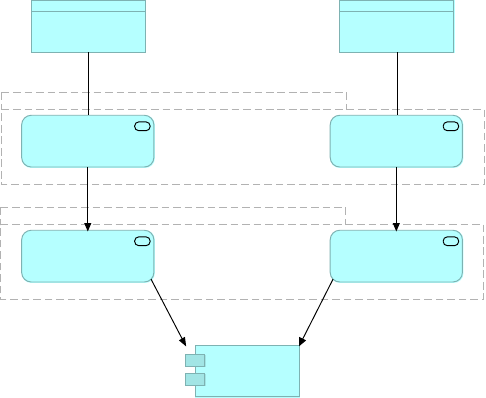

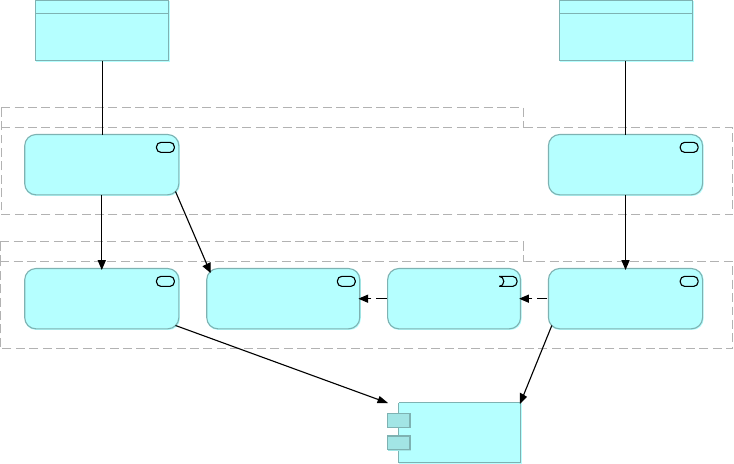

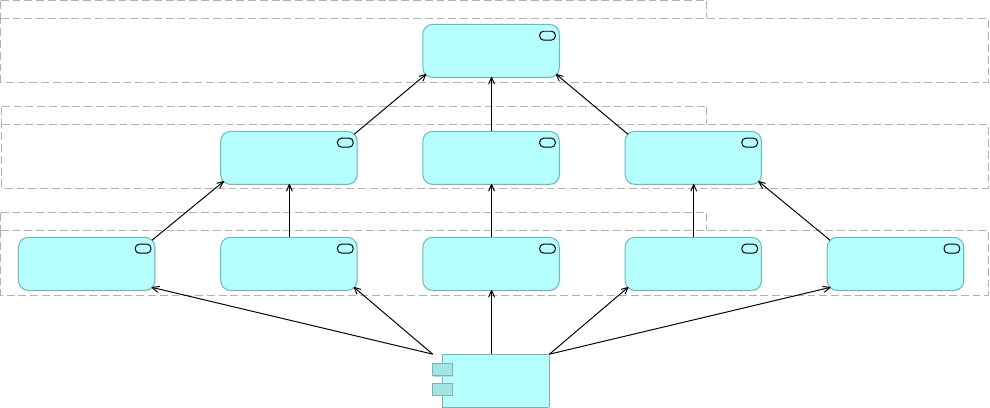

organizational structure as shown in Figure 23:

•Platform Architect:

◦Responsibilities: Platform vision and its evolution to meet tactical and strategic

objectives of the business

◦Profile: Solid understanding of the technical foundation of the platform; a technology

enthusiast with a strong track record of building and running high volume, reliable

architectures, preferably including cloud, As-A-Service infrastructure and applications


•API Architect, Integration Architect/Developer:

◦Responsibilities: Provides “enough” guidance over the design and operations of the C4E

assets; thought leadership, leading key stakeholders in designing and adopting API

architectures; architecting integration solutions for C4E internal customers, working

closely with them through the whole lifecycle; gathering requirements from both the

business and technical teams to determine the integration solutions/APIs

◦Profile: Deep experience of integration and API architecture, thorough understanding of

industry trends, vendors, frameworks and practices

•DevOps Architect/Engineer

[Organizational chart labels: The New Owners; Corporate HQ; Corporate IT; Other Acquisitions' HQs; Acme Insurance HQ; Acme IT; C4E; Personal Motor LoB; Home LoB; Motor Underwriting; Motor Claims; Home Underwriting; Home Claims; Personal Motor LoB IT; Home LoB IT; LoB IT Project Teams. Roles shown: Platform Architect, API Architect, DevOps Architect, Integration Architect, Integration Dev.]

Figure 23. Organizational view into Acme Insurance's target Business Architecture with a C4E

supporting LoBs and their project teams with their integration needs. Technical C4E business

roles are shown in blue, managerial C4E business roles are not shown.

3.1.3. Exercise 1: Measuring success of the C4E

Thinking back on the application network vision on the one hand, and the principles of Acme

Insurance’s C4E on the other hand:

1. Compile a list of statements which, if largely true, allow the conclusion that the C4E is

successful

2. Compile a similar list that allows the conclusion that the application network vision is being

realized

3. From the above lists, extract a list of corresponding metrics


Solution

See 3.1.4.

3.1.4. Key Performance Indicators measuring the success of

Acme Insurance's C4E and the growth of its application

network

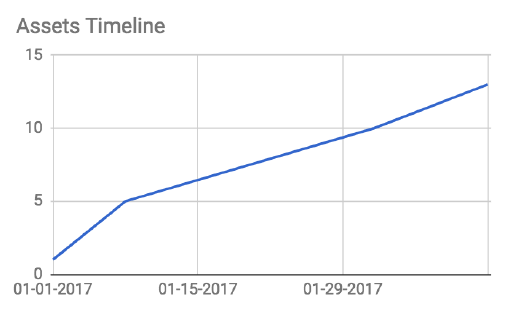

Acme Insurance uses the following key performance indicators (KPIs) to measure and track

the success of the C4E and its activities, as well as the growth and health of the application

network.

All of the metrics can be extracted automatically, through REST APIs, from Anypoint Platform.

•# of assets published to Anypoint Exchange

•# of interactions with Anypoint Exchange assets

•# of APIs managed by Anypoint Platform

•# of System APIs managed by Anypoint Platform

•# of API clients registered for access to APIs

•# of API implementations deployed to Anypoint Platform

•# of API invocations

•# or fraction of lines of code covered by automated tests in CI/CD pipeline

•Ratio of info/warning/critical alerts to number of API invocations
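The last two KPIs are ratios rather than raw counts. As a rough sketch of how they could be derived once the raw numbers have been collected, the following assumes a hypothetical `KpiSnapshot` record; the field names are invented for this example and do not correspond to any Anypoint Platform API response.

```python
from dataclasses import dataclass


@dataclass
class KpiSnapshot:
    """Hypothetical raw counts gathered for one reporting period."""
    api_invocations: int
    alerts: int          # info + warning + critical alerts
    lines_total: int     # lines of code in API implementations
    lines_covered: int   # lines exercised by automated tests in CI/CD


def alert_ratio(snapshot):
    """Ratio of alerts to API invocations (0.0 if nothing was invoked)."""
    if snapshot.api_invocations == 0:
        return 0.0
    return snapshot.alerts / snapshot.api_invocations


def coverage_fraction(snapshot):
    """Fraction of lines of code covered by automated tests."""
    if snapshot.lines_total == 0:
        return 0.0
    return snapshot.lines_covered / snapshot.lines_total
```

Tracking these values per period, rather than as one-off numbers, makes trends visible, in the same way Figure 24 does for the number of published assets.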

Figure 24. Number of assets published to Anypoint Exchange over time.


Figure 25. Current number of assets published to Anypoint Exchange grouped by type of

asset.

3.2. Understanding Anypoint Platform deployment

scenarios

3.2.1. Separating Anypoint Platform control plane and runtime

plane

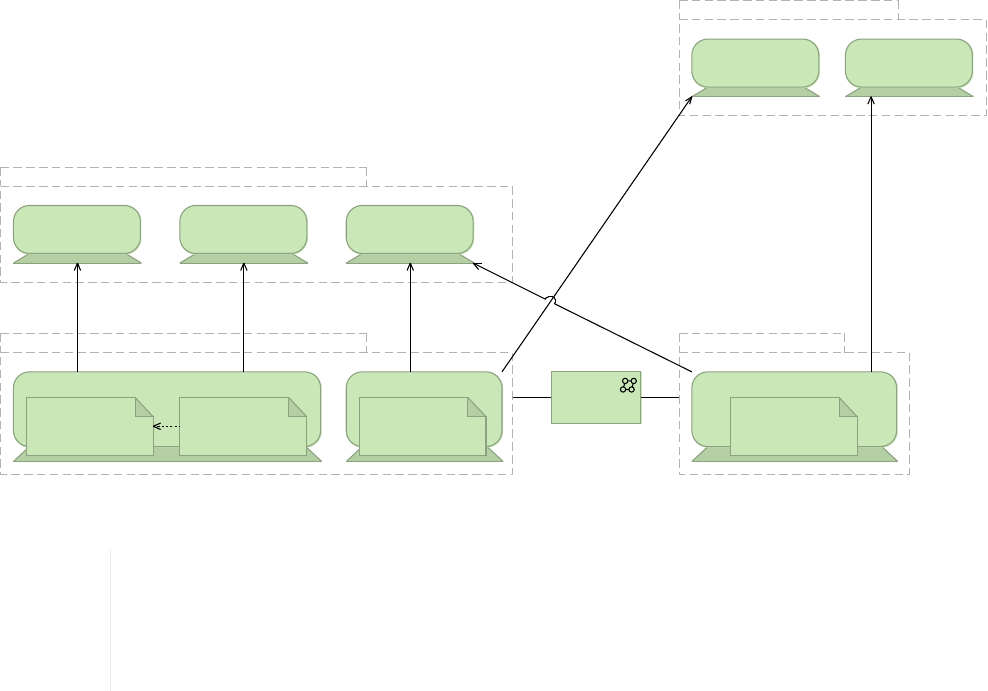

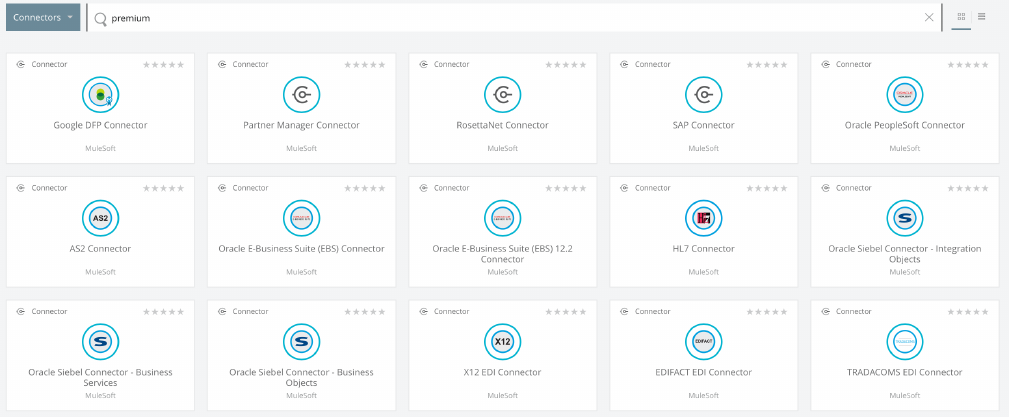

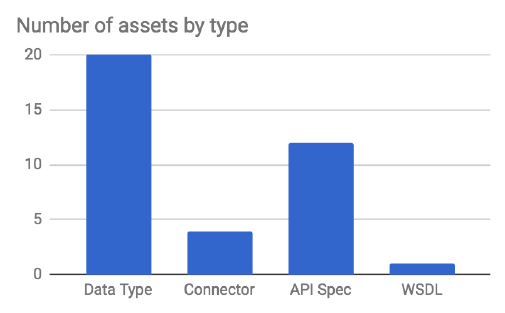

Anypoint Platform components (2.4.1) can be distinguished according to whether they are

directly involved in the execution of Mule applications or not:

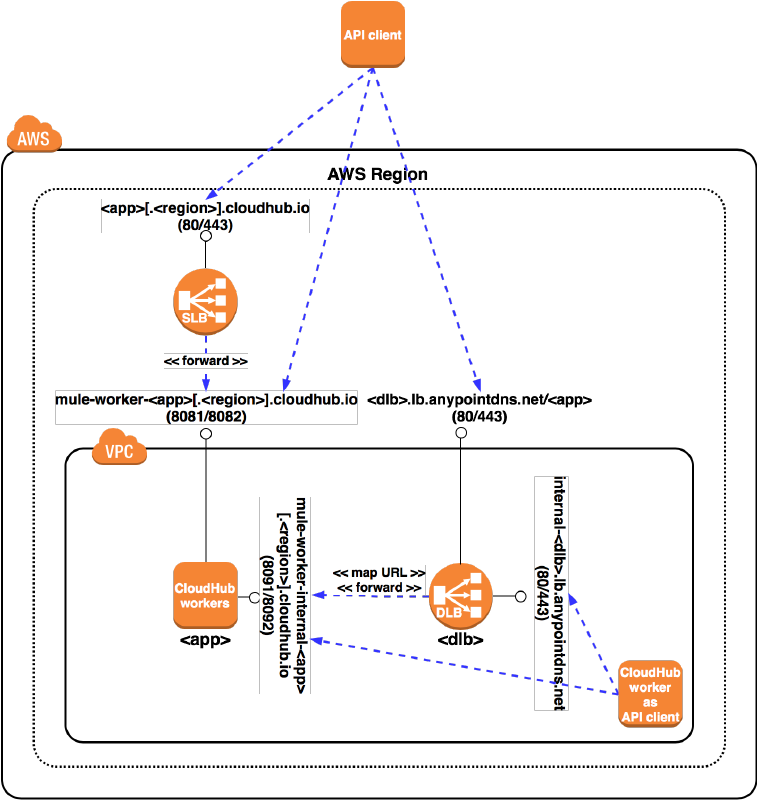

•The Anypoint Platform runtime plane comprises the Mule runtime itself, Anypoint

Connectors used by Mule applications executing in the Mule runtime, and all supporting

Runtime services, incl. Anypoint Enterprise Security, Anypoint Fabric, Anypoint VPCs,

CloudHub Dedicated Load Balancers, Object Store and Anypoint MQ. Simply put, the

runtime plane is where integration logic executes.

•The Anypoint Platform control plane comprises all Anypoint Platform components that are

involved in managing/controlling the components of the runtime plane (i.e., Anypoint

Management Center, incl. Anypoint API Manager and Anypoint Runtime Manager), the

design of APIs or Mule applications (i.e., Anypoint Design Center, incl. Anypoint Studio and

API designer) or the documentation and discovery of application network assets (i.e.,

Anypoint Exchange)
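The split between the two planes can be written down as a simple lookup. The sketch below only encodes the components named in the two bullets above; the function name `plane_of` and the exact spelling of the component names are choices made for this example.

```python
# Components named in the text as part of the runtime plane.
RUNTIME_PLANE = {
    "Mule runtime",
    "Anypoint Connectors",
    "Anypoint Enterprise Security",
    "Anypoint Fabric",
    "Anypoint VPC",
    "CloudHub Dedicated Load Balancer",
    "Object Store",
    "Anypoint MQ",
}

# Components named in the text as part of the control plane.
CONTROL_PLANE = {
    "Anypoint Management Center",
    "Anypoint API Manager",
    "Anypoint Runtime Manager",
    "Anypoint Design Center",
    "Anypoint Studio",
    "API designer",
    "Anypoint Exchange",
}


def plane_of(component):
    """Return 'runtime' or 'control' for a known component name."""
    if component in RUNTIME_PLANE:
        return "runtime"
    if component in CONTROL_PLANE:
        return "control"
    raise KeyError(f"unknown component: {component}")
```

The rule of thumb from the text holds: anything that executes integration logic, or directly supports its execution, maps to "runtime"; everything used to design, manage or discover assets maps to "control".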


Figure 26. Components of the Anypoint Platform belong to either the control plane or the

runtime plane.

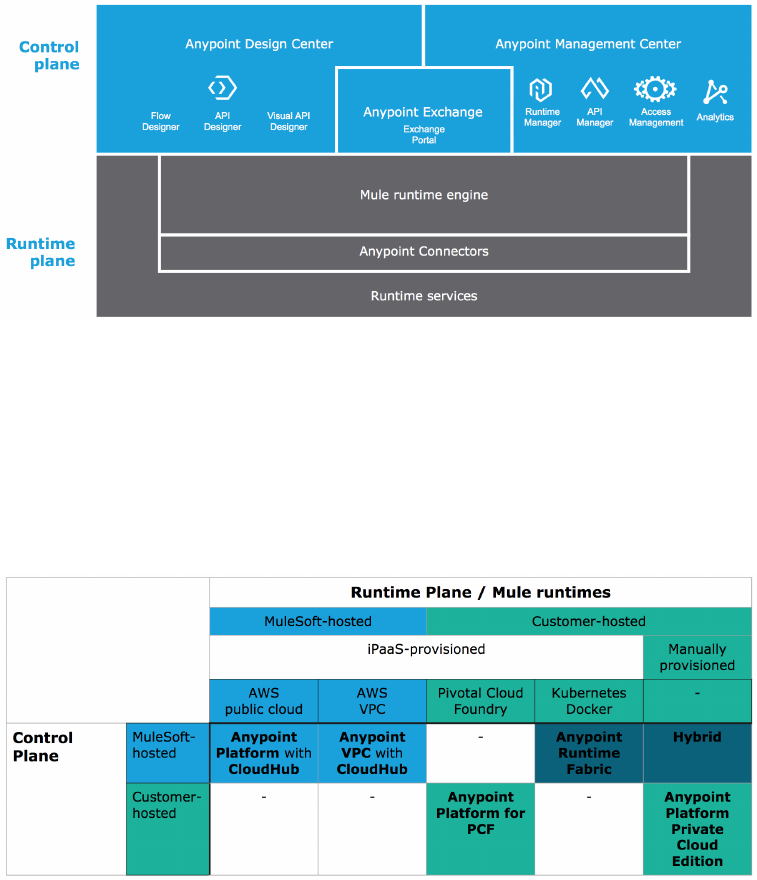

3.2.2. Introducing MuleSoft-hosted and customer-hosted

Anypoint Platform deployments

See [Ref5], [Ref9], [Ref10].

Figure 27. Overview of Anypoint Platform deployment options and MuleSoft product names (in

bold) for each supported scenario. (At the time of this writing, Anypoint Runtime Fabric is not

yet generally available.)

Options for the deployment of the Anypoint Platform control plane:

•MuleSoft-hosted:

◦Product: Anypoint Platform

◦AWS regions: US East (N Virginia, https://anypoint.mulesoft.com) or EU (Frankfurt,

https://eu1.anypoint.mulesoft.com)

•Customer-hosted:

◦Product: Anypoint Platform Private Cloud Edition


Options for the deployment of the Anypoint Platform runtime plane, incl. provisioning of Mule

runtimes:

•MuleSoft-hosted:

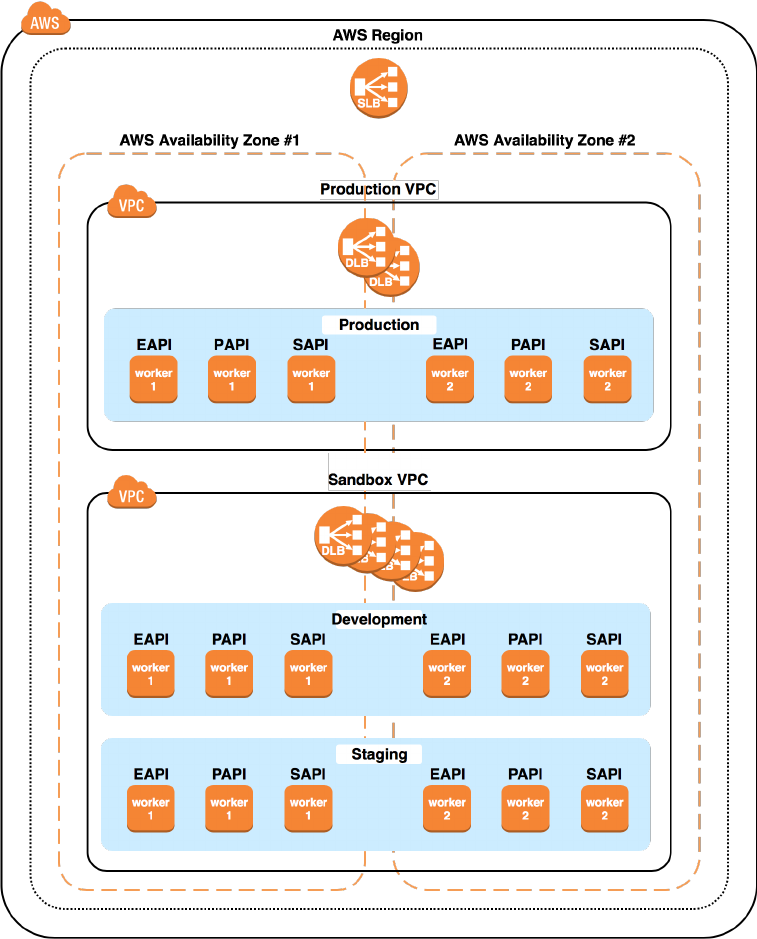

◦In the public AWS cloud: CloudHub

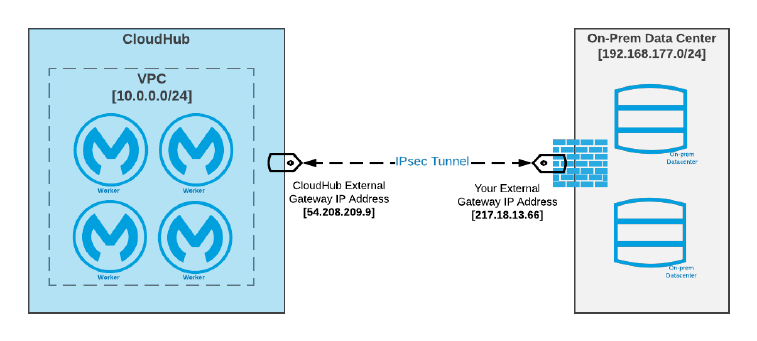

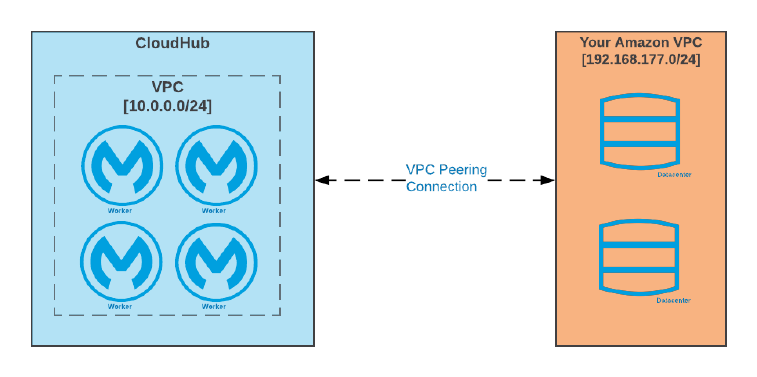

◦In an AWS VPC: CloudHub with Anypoint VPC

◦AWS regions:

▪Under management of the US East control plane: US East, US West, Canada, Asia

Pacific, EU (Frankfurt, Ireland, London, …), South America

▪Under management of the EU control plane: EU (Frankfurt and Ireland)

•Customer-hosted:

◦Manually provisioned Mule runtimes on bare metal, VMs, on-premises, in a public or

private cloud, …

◦iPaaS-provisioned Mule runtimes:

▪MuleSoft-provided software appliance: Anypoint Runtime Fabric

▪Customer-managed Pivotal Cloud Foundry installation: Anypoint Platform for Pivotal

Cloud Foundry
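For the MuleSoft-hosted runtime plane, the list above implies a constraint: the choice of control plane limits the AWS regions in which CloudHub workers can run. A small sketch of that constraint follows. The region labels are taken from the list above, the helper names are hypothetical, and the set for the US East control plane is deliberately incomplete because the source elides further EU regions.

```python
# Runtime plane regions per managing control plane, as listed in the text.
# The US East entry is not exhaustive: the source elides further EU regions.
MANAGED_RUNTIME_REGIONS = {
    "US East control plane": {
        "US East", "US West", "Canada", "Asia Pacific",
        "EU (Frankfurt)", "EU (Ireland)", "EU (London)", "South America",
    },
    "EU control plane": {"EU (Frankfurt)", "EU (Ireland)"},
}


def can_manage(control_plane, runtime_region):
    """True if the control plane can manage workers in the given region."""
    return runtime_region in MANAGED_RUNTIME_REGIONS[control_plane]


def control_planes_for(runtime_region):
    """All control planes able to manage the given runtime region."""
    return sorted(
        cp for cp, regions in MANAGED_RUNTIME_REGIONS.items()
        if runtime_region in regions
    )
```

For example, an organization that must keep both control plane and runtime plane in the EU would pick the EU control plane and is then limited to the two EU regions it manages.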

3.2.3. MuleSoft-hosted Anypoint Platform control plane and

runtime plane with iPaaS functionality in public cloud


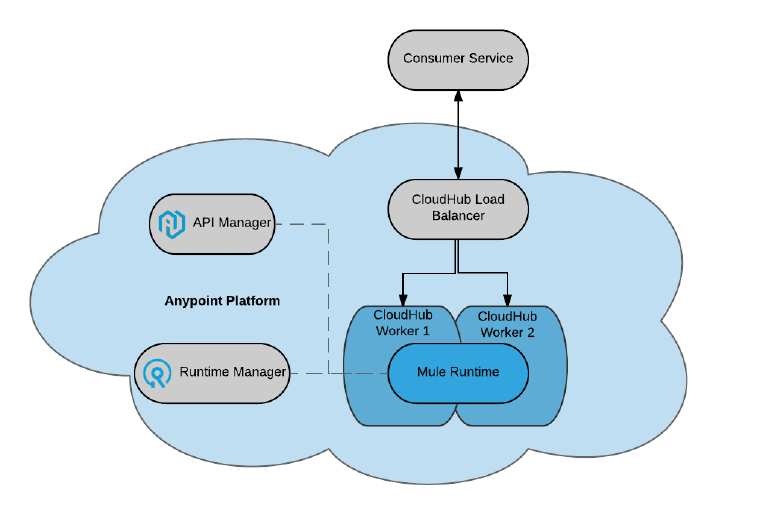

Figure 28. MuleSoft-hosted Anypoint Platform control plane managing MuleSoft-hosted

Anypoint Platform runtime plane with iPaaS-provisioned Mule runtimes on CloudHub in the

public AWS cloud.

3.2.4. MuleSoft-hosted Anypoint Platform control plane and

runtime plane with iPaaS functionality in Anypoint VPC


Figure 29. MuleSoft-hosted Anypoint Platform control plane managing MuleSoft-hosted

Anypoint Platform runtime plane with iPaaS-provisioned Mule runtimes on CloudHub in an

Anypoint VPC.

3.2.5. MuleSoft-hosted Anypoint Platform control plane and

customer-hosted runtime plane without iPaaS functionality


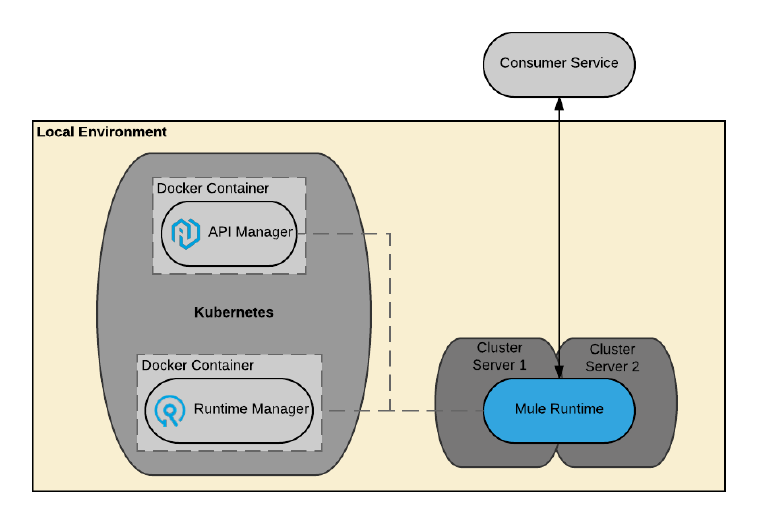

Figure 30. MuleSoft-hosted Anypoint Platform control plane managing customer-hosted

Anypoint Platform runtime plane with manually provisioned Mule runtimes.

3.2.6. Customer-hosted Anypoint Platform control plane and

runtime plane without iPaaS functionality


Figure 31. Anypoint Platform Private Cloud Edition: Customer-hosted Anypoint Platform control

plane managing customer-hosted Anypoint Platform runtime plane with manually provisioned

Mule runtimes.

3.2.7. Customer-hosted Anypoint Platform control plane and

runtime plane with iPaaS functionality on Pivotal Cloud Foundry


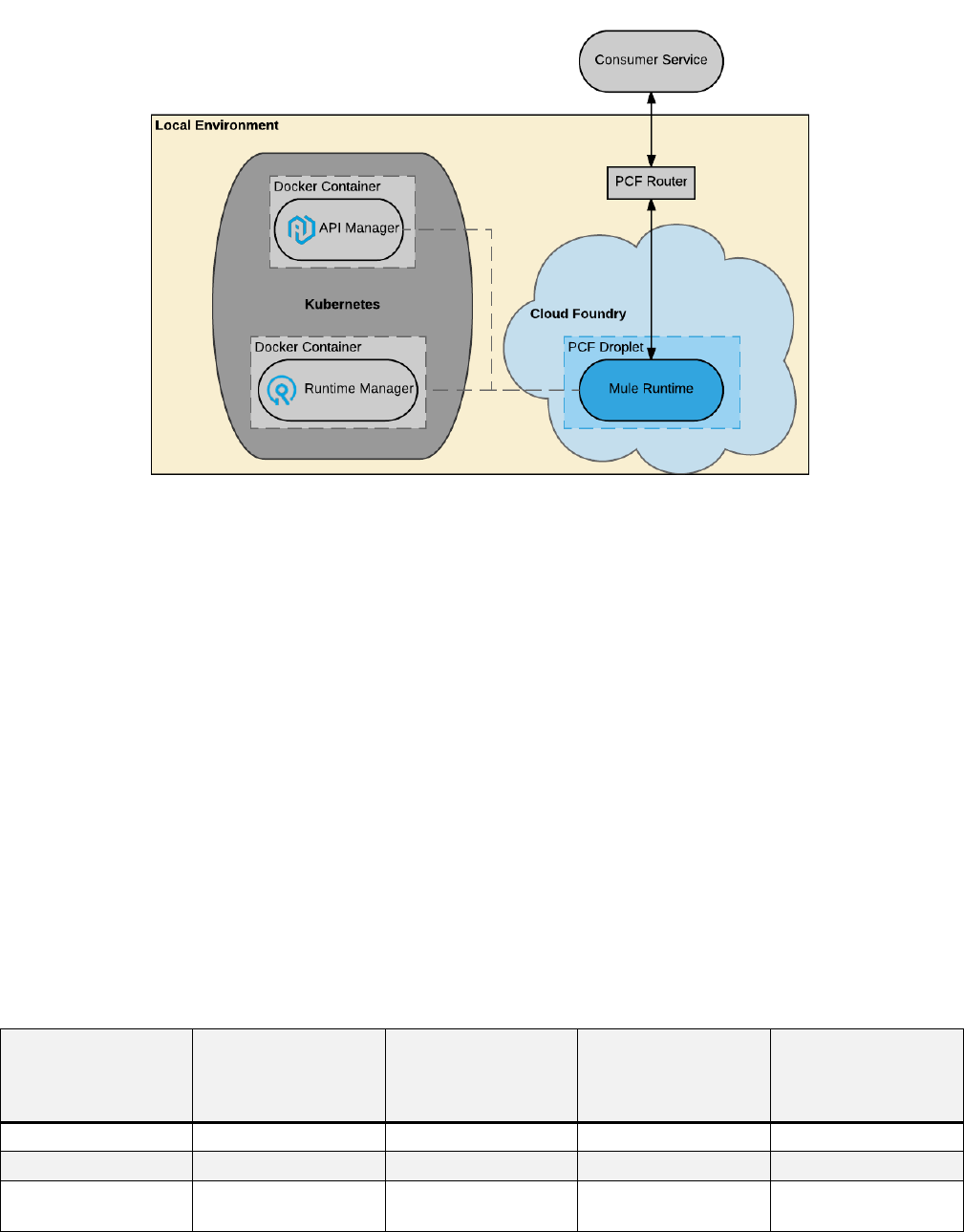

Figure 32. Anypoint Platform for Pivotal Cloud Foundry: Customer-hosted Anypoint Platform

control plane managing customer-hosted Anypoint Platform runtime plane with iPaaS-

provisioned Mule runtimes on PCF.

3.2.8. Anypoint Platform differences between deployment

scenarios

Anypoint Platform deployment options differ in various ways:

•At the highest level, not all Anypoint Platform components are currently available for every

Anypoint Platform deployment scenario.

•Some features of Anypoint Platform components that are available in more than one

deployment scenario differ in their details, typically due to the different technical

characteristics and capabilities available in each case.

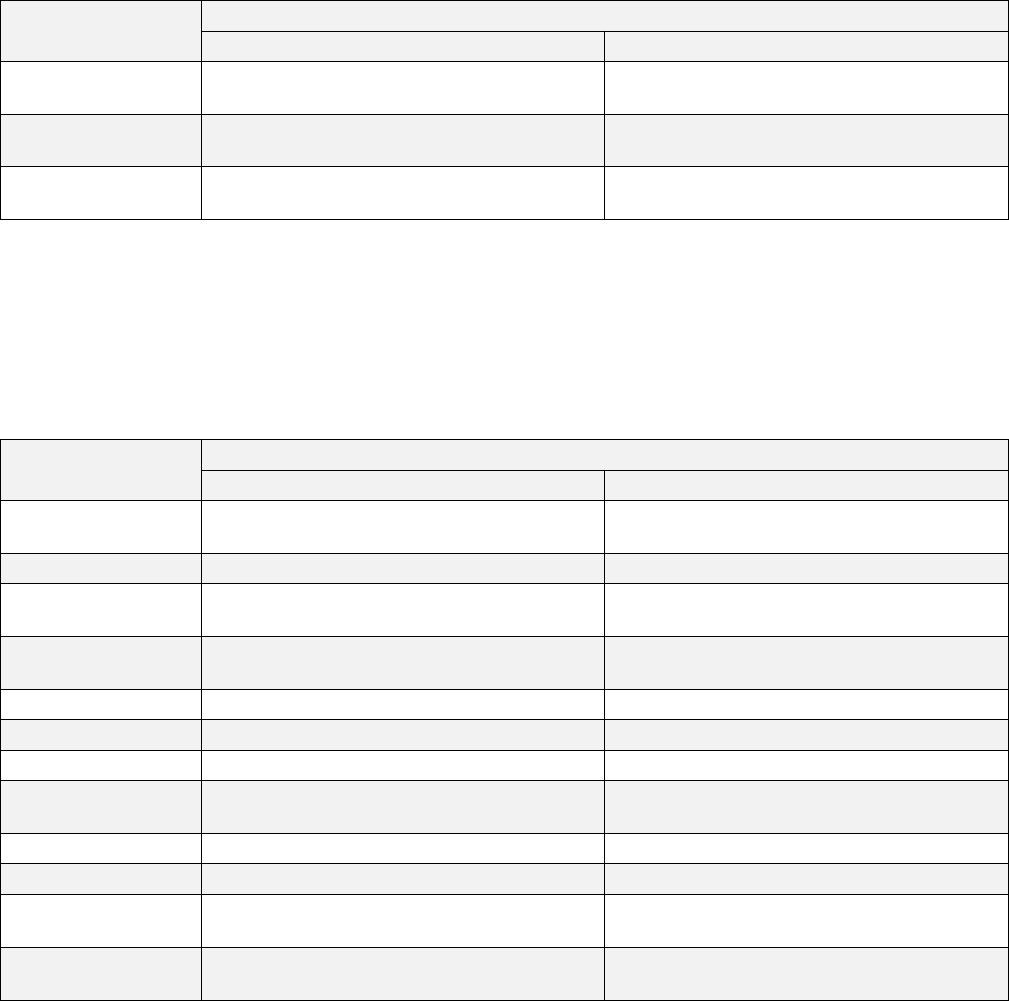

Table 1. Availability of Anypoint Platform components and high-level features in different

deployment scenarios.

| Component, Feature | MuleSoft-hosted Anypoint Platform | Hybrid | Anypoint Platform Private Cloud Edition | Anypoint Platform for Pivotal Cloud Foundry |
|---|---|---|---|---|
| API designer | yes | yes | yes | yes |
| Flow designer | yes | yes | no | no |
| Anypoint Access management | yes | yes | yes | yes |
| LDAP for Identity Management | no | no | yes | yes |
| Anypoint Runtime Manager | yes | yes | yes | yes |
| External Runtime Analytics | no | yes | yes | yes |
| Schedules UI | yes | yes | yes | yes |
| Anypoint Runtime Manager monitoring dashboards | yes | yes | no | no |
| Insight | yes | yes | no | no |
| Anypoint Runtime Manager alerts | yes | yes | no | no |
| Anypoint API Manager | yes | yes | yes | yes |
| Anypoint API Manager alerts | yes | yes | no | no |
| Anypoint Analytics | yes | yes | no | no |
| External API Analytics | no | yes | yes | yes |
| Anypoint Exchange | yes | yes | yes | yes |
| Anypoint MQ | yes | yes | no | no |
| iPaaS | yes (CloudHub) | no | no | yes |
| Mule runtime load balancing | yes (Fabric) | no | no | yes (PCF) |
| Mule runtime persistent VM queues | yes (Fabric) | yes | yes | yes |
| Mule runtime auto-restart | yes | no | no | yes |
| Mule runtime cluster | no | yes | yes | yes |
| Zero downtime deployments | yes | no | no | yes |

Notes:

•External Analytics refers to Splunk, ELK or similar software

•Persistent VM queues in a non-CloudHub deployment scenario have many more options,

such as cluster-wide in-memory replication or persistence on disk or in a database, but may


require management of persistent storage

•The Tanuki Software Java Service Wrapper offers limited auto-restart capabilities also for

customer-managed Mule runtimes, but this is not comparable to CloudHub’s, Anypoint

Runtime Fabric’s or PCF’s sophisticated health-check-based auto-restart feature

•Anypoint Runtime Manager alerts on CloudHub (and only on CloudHub) also include the

option of custom alerts and notifications (via the CloudHub connector)

•Anypoint Platform Private Cloud Edition supports minimal Anypoint Runtime Manager alerts

related to the Mule application being deployed, undeployed, etc., but no alerts based on

Mule events or API events

•Anypoint Runtime Manager monitoring dashboards include load and performance metrics

for Mule applications and the servers to which Mule runtimes are deployed

•Insight is a troubleshooting tool that gives in-depth visibility into Mule applications by

collecting Mule events (business events and default events) and the transactions to which

these events belong. Only CloudHub supports the replay of transactions (which causes re-

processing the involved message)

•Clustering Mule runtimes when deploying through Anypoint Platform for Pivotal Cloud

Foundry requires a PCF Hazelcast service. Mule runtime clusters in Hybrid and Anypoint

Platform Private Cloud Edition deployment scenarios only need and use the Hazelcast

features built into the Mule runtime itself.
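One way to work with Table 1 is to treat it as a feature matrix and ask which deployment scenarios satisfy a set of required features. The sketch below encodes only a handful of rows from Table 1 as booleans (dropping qualifiers such as "(CloudHub)"); the shortened scenario labels and the helper `scenarios_supporting` are hypothetical names for this example.

```python
# Column order follows Table 1 (labels shortened for this example).
SCENARIOS = (
    "MuleSoft-hosted",
    "Hybrid",
    "Private Cloud Edition",
    "Pivotal Cloud Foundry",
)

# A subset of Table 1 rows; True means "yes" in the table.
AVAILABILITY = {
    "Flow designer": (True, True, False, False),
    "LDAP for Identity Management": (False, False, True, True),
    "Anypoint MQ": (True, True, False, False),
    "iPaaS": (True, False, False, True),
    "Mule runtime cluster": (False, True, True, True),
    "Zero downtime deployments": (True, False, False, True),
}


def scenarios_supporting(required_features):
    """Return the scenarios in which every required feature is available."""
    result = []
    for index, scenario in enumerate(SCENARIOS):
        if all(AVAILABILITY[feature][index] for feature in required_features):
            result.append(scenario)
    return result
```

A query such as `scenarios_supporting(["Anypoint MQ", "Mule runtime cluster"])` narrows the options to the Hybrid scenario, while requiring both Flow designer and LDAP for Identity Management leaves no scenario at all, which signals that a requirement has to be relaxed.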

3.2.9. Exercise 2: Choosing between deployment scenarios

Reflecting on the various deployment scenarios supported by Anypoint Platform:

1. Discuss the characteristics of each scenario

2. For each deployment scenario, identify requirements that would clearly require that

scenario

Solution

Anypoint Platform deployment scenarios can be evaluated along the following dimensions:

•Regulatory or IT operations requirements that mandate on-premises processing of every

data item, including meta-data about API invocations and messages processed within Mule

applications: requires Anypoint Platform Private Cloud Edition or Anypoint Platform for

Pivotal Cloud Foundry

•Time-to-market, assuming the effort to deploy Anypoint Platform must be included in the

elapsed time: favors MuleSoft-hosted Anypoint Platform

•IT operations effort: favors MuleSoft-hosted Anypoint Platform over all other deployment

scenarios; favors Anypoint Runtime Fabric over Anypoint Platform for Pivotal Cloud Foundry


over Anypoint Platform Private Cloud Edition

•Latency and throughput when accessing on-premises data sources: favors scenarios where

Mule runtimes can be deployed close to these data sources, i.e., Anypoint Runtime Fabric,

Anypoint Platform Private Cloud Edition and Anypoint Platform for Pivotal Cloud Foundry

over CloudHub with geographically close runtime plane

•Isolation between Mule applications: favors scenarios where each Mule application is

assigned to its own Mule runtime; favors bare metal over VMs over containers for Mule

runtimes

•Control over Mule runtime characteristics like JVM and machine memory, garbage collection

settings, hardware, etc.: favors Hybrid and Anypoint Platform Private Cloud Edition over

Anypoint Platform for Pivotal Cloud Foundry over MuleSoft-hosted Anypoint Platform

•Scalability of runtime plane: consider horizontal and vertical scaling; consider static and

dynamic (load-based, automatic) scaling; favors cloud-deployments of runtime plane,

including CloudHub; favors iPaaS functionality over manually provisioned Mule runtimes

•Roll-out of new releases: continuously (weekly) in the MuleSoft-hosted control plane versus

quarterly releases of Anypoint Platform Private Cloud Edition
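Some of the dimensions above act as hard constraints (on-premises processing of all data and metadata), while others merely rank the remaining options. The following sketch shows that two-step reasoning for two of the ranking dimensions only. The scenario labels, the `priority` keys and the numeric ranks are simplifications chosen for this example, not an official selection method.

```python
SCENARIOS = [
    "MuleSoft-hosted",
    "Hybrid",
    "Private Cloud Edition",
    "Pivotal Cloud Foundry",
]

# Lower rank = more favored. Scenarios not listed get a neutral rank of 1.
PREFERENCES = {
    # Time-to-market favors the MuleSoft-hosted platform.
    "time_to_market": {"MuleSoft-hosted": 0},
    # Control over runtime characteristics: Hybrid and Private Cloud Edition
    # over Pivotal Cloud Foundry over MuleSoft-hosted.
    "runtime_control": {
        "Hybrid": 0,
        "Private Cloud Edition": 0,
        "Pivotal Cloud Foundry": 1,
        "MuleSoft-hosted": 2,
    },
}


def shortlist(on_prem_metadata_required, priority):
    """Filter by the hard constraint, then order by the chosen priority."""
    candidates = list(SCENARIOS)
    if on_prem_metadata_required:
        # Only fully customer-hosted scenarios keep all metadata on-premises.
        allowed = ("Private Cloud Edition", "Pivotal Cloud Foundry")
        candidates = [s for s in candidates if s in allowed]
    rank = PREFERENCES[priority]
    # sorted() is stable, so equally ranked scenarios keep their list order.
    return sorted(candidates, key=lambda s: rank.get(s, 1))
```

In practice several dimensions compete, so a real evaluation would weigh them against each other rather than apply a single priority.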

3.2.10. Anypoint Platform data residency

The deployment scenarios supported by Anypoint Platform cover a large number of options of

who (MuleSoft or the customer) controls and manages the various components of Anypoint

Platform, where these components reside, and where Mule applications execute as a result. As

Mule applications execute integration logic, data flows through the various systems of Anypoint

Platform and beyond. This section describes in detail which kinds of data are sent and stored,

and where. This is particularly relevant in light of various regulatory (legal) requirements such

as GDPR (https://www.eugdpr.org) and the Patriot Act.

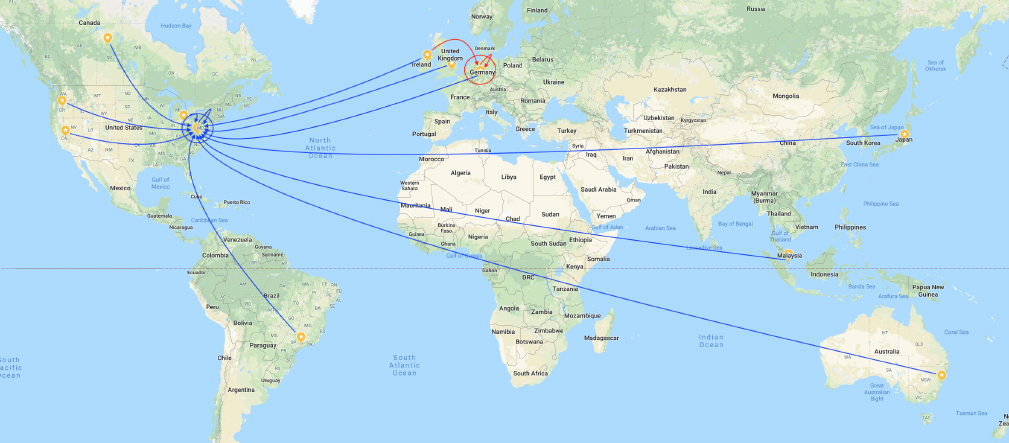

Figure 33. AWS regions in which MuleSoft hosts the Anypoint Platform control plane (large

colored circles) and runtime plane (small yellow marks). Data resides within the runtime

plane, while limited amounts of metadata are sent from there to the managing control plane

(arrows in the color of the managing control plane).

The fundamental principle is that the location of Mule runtimes, together with the integration

logic implemented by Mule applications executing in these Mule runtimes, determines the

location and residency of all data. In addition to the data itself, limited amounts of metadata

and metrics are exchanged with the Anypoint Management Center components of the Anypoint

Platform control plane. Specifically:

•Mule runtimes and Mule applications can either be managed entirely by the customer or

execute in one of the AWS regions supported by CloudHub as described in 3.2.2: this

determines where all data, in the form of Mule messages, is processed.

•Mule applications can make use of Object Store, persistent VM queues or other resilience

features. In a CloudHub deployment, all of these features store data in the same AWS

region as the Mule runtime itself. For customer-managed deployments the customer fully

controls where Mule applications send or store data.

•Mule applications may also make use of Anypoint MQ (8.3.1): Anypoint MQ

queues/exchanges are explicitly created to reside in an AWS region. The available regions

are typically those of the Anypoint Platform runtime plane.

•Metadata, incl. metrics, about Mule messages and API invocations is sent by the Mule

runtime to Anypoint Management Center (10.1.1). Thus by selecting either a customer-

hosted control plane, or one of the supported AWS regions of the MuleSoft-hosted Anypoint

Platform (3.2.2), customers determine where this metadata is sent.

◦Example of metadata: CPU/memory usage, message/error count, API name and version,

geodata about the API client, HTTP method, violated API policy name, etc. (10.2.1)

•Logs produced by Mule applications deployed to CloudHub have residency characteristics

similar to those of metadata.

•Mule applications may produce business events and Anypoint Runtime Manager may use

the Insight feature to analyze and replay business events. Depending on the details of the

configuration, events may also include the data (payload) of the Mule message, although

this is not the case by default. If this feature is chosen, then message data (and not just

metadata) is sent to Anypoint Runtime Manager.

•Mule applications are typically deployed to Mule runtimes using Anypoint Runtime Manager:

this means that the Mule applications themselves, incl. all code and resources packaged

within the Mule applications, are stored where the Anypoint Platform control plane resides.

These rules are typically applied as follows to meet regulatory requirements through

jurisdiction-local deployments (assuming suitably implemented Mule applications):

•A combination of the MuleSoft-hosted Anypoint Platform EU/US control plane and a

matching MuleSoft-hosted EU/US runtime plane (i.e., using CloudHub and Anypoint MQ in a

matching AWS region) keeps all data and metadata in the EU/US.

•A combination of the MuleSoft-hosted Anypoint Platform EU/US control plane and a

customer-hosted runtime plane (i.e., Hybrid deployment, optionally with Anypoint Runtime

Fabric) keeps all data on customer-hosted infrastructure and all metadata in the EU/US.

•A combination of a customer-hosted Anypoint Platform control plane and a matching

customer-hosted runtime plane (i.e., using Anypoint Platform Private Cloud Edition or

Anypoint Platform for Pivotal Cloud Foundry) keeps all data and metadata on customer-

hosted infrastructure.
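The residency rules above can be summarized in a short sketch. The following Python is an illustration only and is not part of Anypoint Platform: the class `Deployment`, the function `residency` and the location labels are hypothetical names introduced here. It assumes suitably implemented Mule applications and the default configuration in which business events do not include message payloads.

```python
from dataclasses import dataclass

# Hypothetical location labels for this sketch; not Anypoint Platform identifiers.
EU = "MuleSoft-hosted EU"
US = "MuleSoft-hosted US"
CUSTOMER = "customer-hosted infrastructure"


@dataclass(frozen=True)
class Deployment:
    control_plane: str  # where the Anypoint Platform control plane resides
    runtime_plane: str  # where Mule runtimes (and Anypoint MQ, if used) reside


def residency(deployment: Deployment) -> dict:
    """Map each kind of data to the location where it resides.

    Assumes suitably implemented Mule applications and business events
    that carry no message payload (the default).
    """
    return {
        # Mule messages are processed where the Mule runtimes execute; in
        # CloudHub, Object Store and persistent VM queues use the same region.
        "data": deployment.runtime_plane,
        # Metrics and metadata about API invocations go to the control plane.
        "metadata": deployment.control_plane,
        # Applications deployed through Anypoint Runtime Manager are stored
        # where the control plane resides.
        "application_archives": deployment.control_plane,
    }


# Hybrid deployment: EU control plane managing customer-hosted Mule runtimes.
hybrid = Deployment(control_plane=EU, runtime_plane=CUSTOMER)
print(residency(hybrid))
```

For the hybrid example, message data maps to customer-hosted infrastructure while metadata and application archives map to the EU control plane, which mirrors the second combination listed above.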

3.3. Onboarding Acme Insurance onto Anypoint Platform

3.3.1. Anypoint Access management

•Controls access to entitlement areas in Anypoint Platform

•Manages

◦Business groups, users, roles and permissions

◦Environments

◦Other Resources

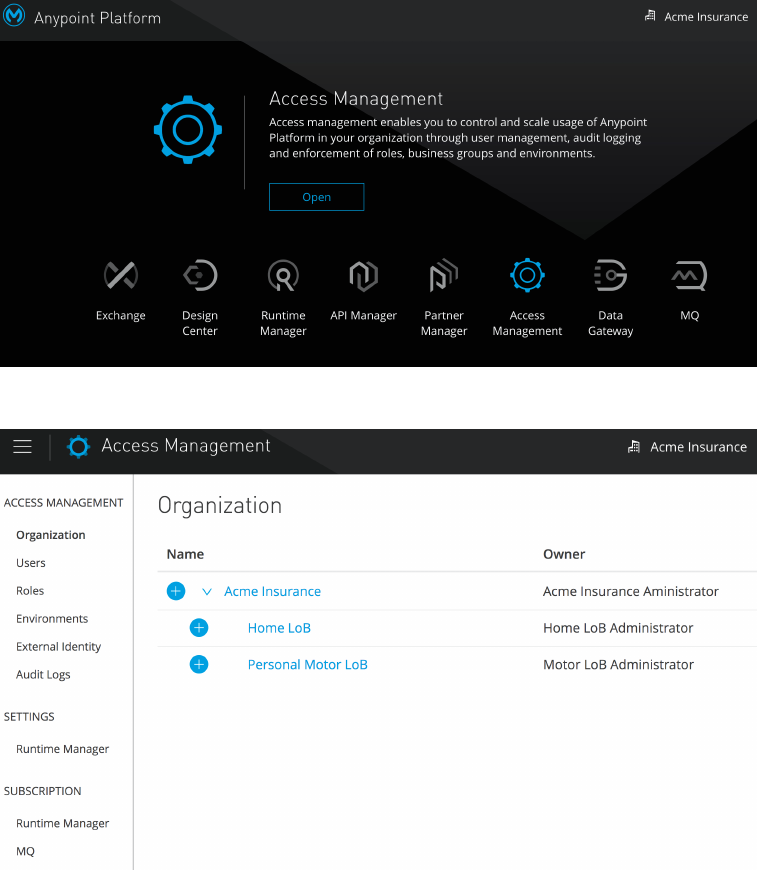

Figure 34. Anypoint Access management and the Anypoint Platform entitlements.

Figure 35. Anypoint Access management controls access to and allocation of various resources

on Anypoint Platform.

3.3.2. Anypoint Platform organizations and business groups

•Organization: An administrative collection of resources and users

•Business group: A sub-organization at any level
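Because a business group is a sub-organization at any level, the structure is naturally a tree. The sketch below is a minimal illustration of that idea, not an Anypoint Platform API: the class `BusinessGroup` and its methods are hypothetical, and modeling the organization itself as the root node is an assumption made for brevity.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass(eq=False)  # identity-based equality avoids recursive comparisons
class BusinessGroup:
    name: str
    parent: Optional["BusinessGroup"] = field(default=None, repr=False)
    children: List["BusinessGroup"] = field(default_factory=list)

    def add_child(self, name: str) -> "BusinessGroup":
        """Create a business group directly below this one."""
        child = BusinessGroup(name, parent=self)
        self.children.append(child)
        return child

    def path(self) -> List[str]:
        """Return the names from the root organization down to this group."""
        names = []
        node: Optional["BusinessGroup"] = self
        while node is not None:
            names.append(node.name)
            node = node.parent
        return list(reversed(names))


# The organization is modeled as the root; business groups nest below it.
acme = BusinessGroup("Acme Insurance")
personal_motor = acme.add_child("Personal Motor LoB")
print(personal_motor.path())
```

Nesting can continue to any depth by calling `add_child` on an existing business group.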

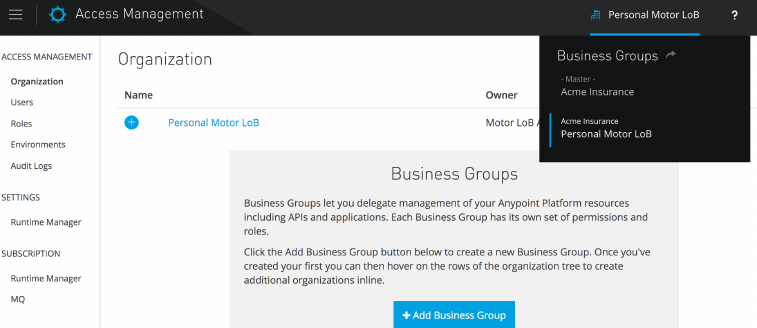

Figure 36. Anypoint Access management at the level of the Personal Motor LoB business

group.

3.3.3. Identity Management vs Client Management in Anypoint Platform

•Identity Management is concerned with users of Anypoint Platform

◦includes users of the Anypoint Platform web UI and the Anypoint Platform APIs

◦enables Single Sign-On (SSO)

•Client Management is concerned with API clients using OAuth 2.0

By default, Anypoint Platform acts as an Identity Provider for Identity Management. But

Anypoint Platform also supports configuring one external Identity Provider for each of these

two uses, independently of each other.

If an external Identity Provider is configured for Identity Management, then SAML 2.0 bearer

tokens issued by that Identity Provider can be used for invocations of the Anypoint Platform

APIs. Optionally, before configuring an external Identity Provider for Identity Management,

set up administrative users for invoking the Anypoint Platform APIs. These will remain valid

after the external Identity Provider has been configured.

3.3.4. Supported Identity Provider standards and products

For Identity Management, Anypoint Platform supports:

•The mapping of Anypoint Platform roles to groups in an external Identity Provider

•OpenID Connect

◦a standard implemented by Identity Providers such as PingFederate, OpenAM, Okta

◦does not support single log-out

•SAML 2.0

◦a standard implemented by Identity Providers such as PingFederate, OpenAM, Okta,

Shibboleth, Active Directory Federation Services (AD FS), OneLogin, CA Single Sign-On

◦supports single log-out

•LDAP

◦a standard that is supported for Identity Management only on Anypoint Platform Private

Cloud Edition

For Client Management, Anypoint Platform supports the following Identity Providers as OAuth

2.0 servers:

•OpenAM

•PingFederate

•OpenID Connect Dynamic Client Registration (DCR)

◦a standard implemented by Identity Providers such as Okta and OpenAM
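The support matrix above can be captured as a small lookup table, which may help when shortlisting an Identity Provider. This is a sketch under stated assumptions: the dictionary layout and function names are hypothetical, the product sets contain only the examples named in the text (which are introduced with "such as" and are therefore not exhaustive), and LDAP is omitted because it applies only to Anypoint Platform Private Cloud Edition.

```python
# Example products named in the text; these sets are not exhaustive.
IDENTITY_MANAGEMENT_STANDARDS = {
    "OpenID Connect": {
        "example_products": {"PingFederate", "OpenAM", "Okta"},
        "single_logout": False,
    },
    "SAML 2.0": {
        "example_products": {
            "PingFederate", "OpenAM", "Okta", "Shibboleth",
            "Active Directory Federation Services (AD FS)",
            "OneLogin", "CA Single Sign-On",
        },
        "single_logout": True,
    },
}

CLIENT_MANAGEMENT_PRODUCTS = {
    "OpenAM",
    "PingFederate",
    "Okta",  # via OpenID Connect Dynamic Client Registration (DCR)
}


def identity_management_standards(product: str,
                                  need_single_logout: bool = False) -> list:
    """List the standards through which a product can serve Identity Management."""
    return sorted(
        name
        for name, info in IDENTITY_MANAGEMENT_STANDARDS.items()
        if product in info["example_products"]
        and (info["single_logout"] or not need_single_logout)
    )


def usable_for_client_management(product: str) -> bool:
    """Check whether a product is listed as an OAuth 2.0 server option."""
    return product in CLIENT_MANAGEMENT_PRODUCTS


print(identity_management_standards("PingFederate"))
print(identity_management_standards("PingFederate", need_single_logout=True))
print(usable_for_client_management("PingFederate"))
```

In this sketch a product such as PingFederate qualifies for both uses, whereas a product listed only under SAML 2.0 qualifies for Identity Management alone.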

3.3.5. Selecting an Identity Provider for Acme Insurance

Acme Insurance currently uses Microsoft Active Directory (AD) to store user accounts.

After a brief evaluation period Acme Insurance chooses PingFederate as an Identity Provider

on top of AD. They configure their Anypoint Platform organization in the MuleSoft-hosted

Anypoint Platform to access their on-premises PingFederate instance for Identity Management.

Acme Insurance is currently unsure whether they will need OAuth 2.0, but if they do, they plan

to use the same PingFederate instance also for Client Management.

Summary

•A federated C4E is established at Acme Insurance to facilitate API-led connectivity and the

growth of an application network

◦Federation plays to the strength of Acme Insurance’s LoB IT

◦KPIs to measure the C4E’s success are defined and monitored

•Anypoint Platform control plane and runtime plane can both be hosted by MuleSoft or

customers

•Mule runtimes can be provisioned manually or through iPaaS functionality

•iPaaS-provisioning of Mule runtimes is supported via CloudHub, Anypoint Platform for

Pivotal Cloud Foundry and Anypoint Runtime Fabric

•Not all Anypoint Platform components are available in all deployment scenarios

•Acme Insurance and its LoBs and users are onboarded onto Anypoint Platform using an

external Identity Provider

•Identity Management and Client Management are clearly distinct functional areas, both

supported by Identity Providers

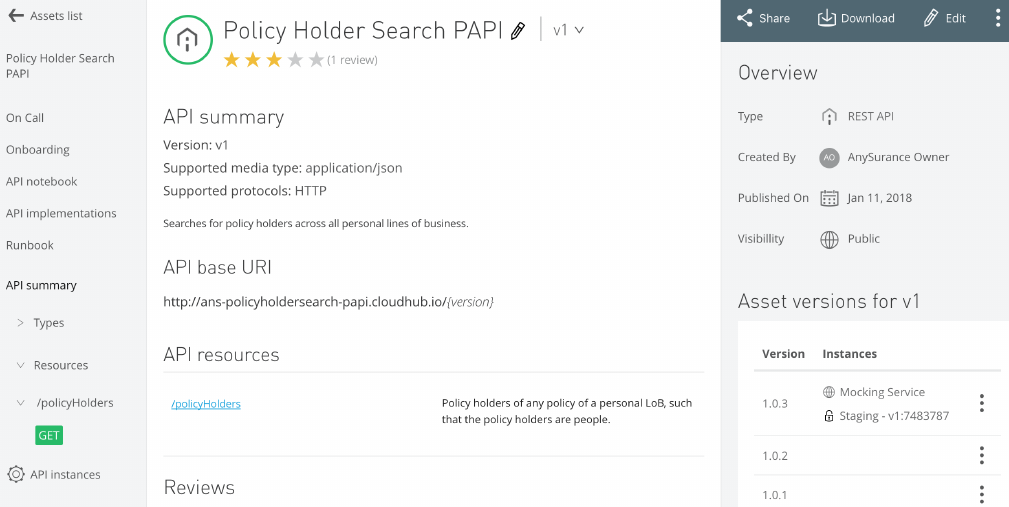

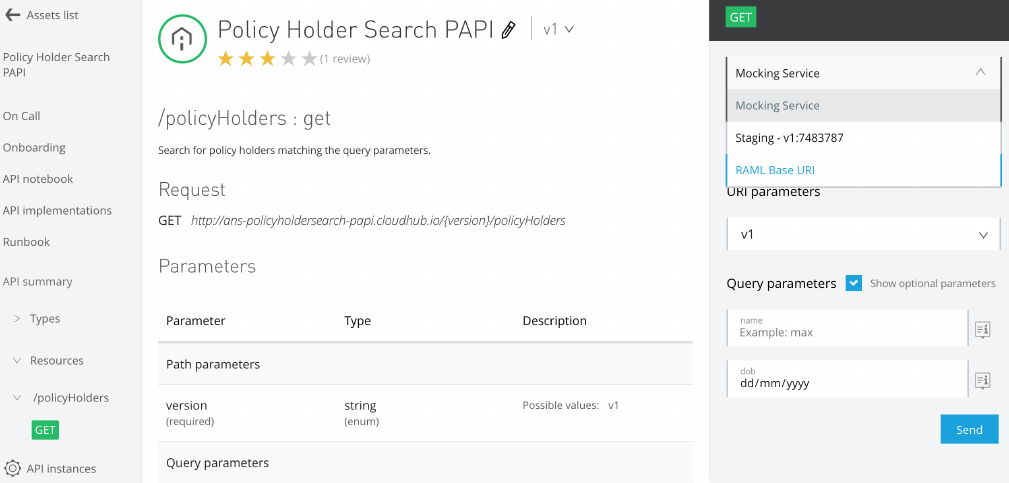

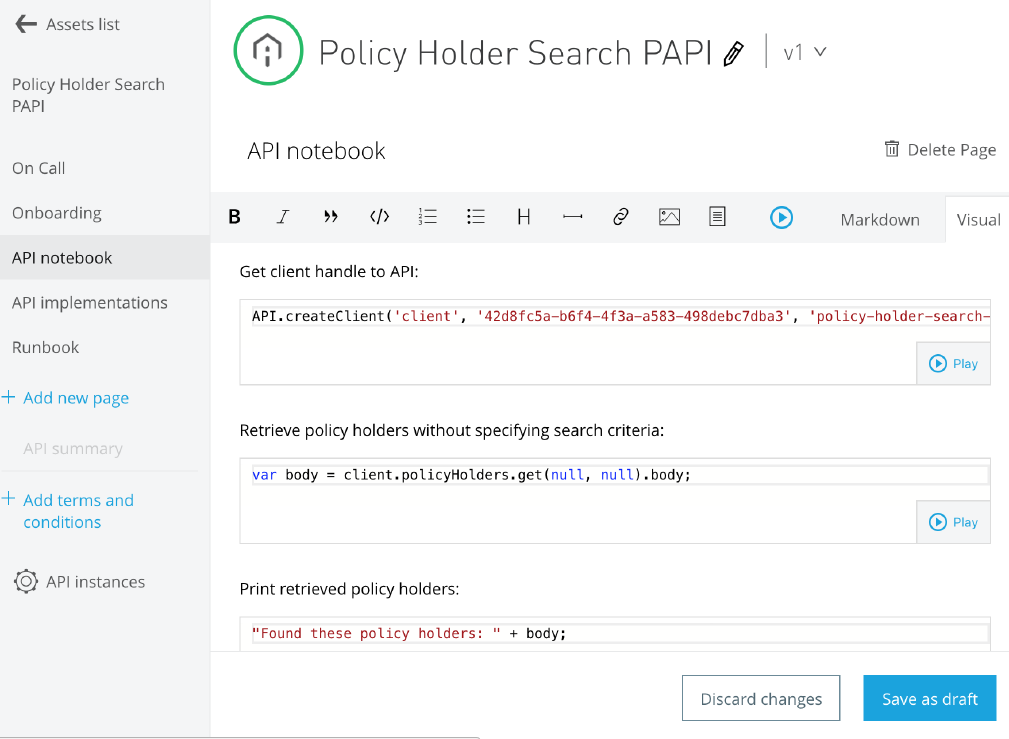

Module 4. Identifying, Reusing and Publishing APIs

Objectives

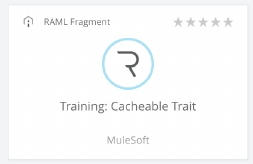

•Map Acme Insurance’s planned strategic initiatives to products and projects

•Identify APIs needed to implement these products

•Assign each API to one of the three tiers of API-led connectivity

•Reason in detail about the composition and collaboration of APIs

•Reuse APIs wherever possible

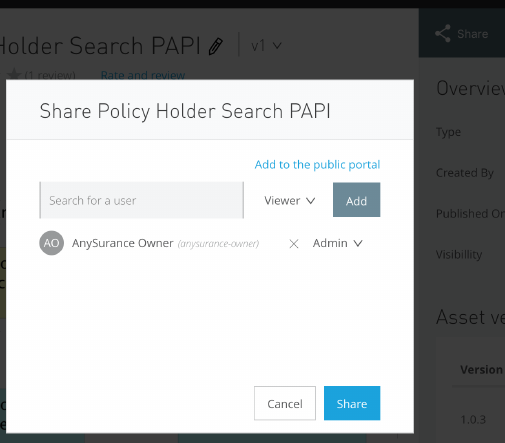

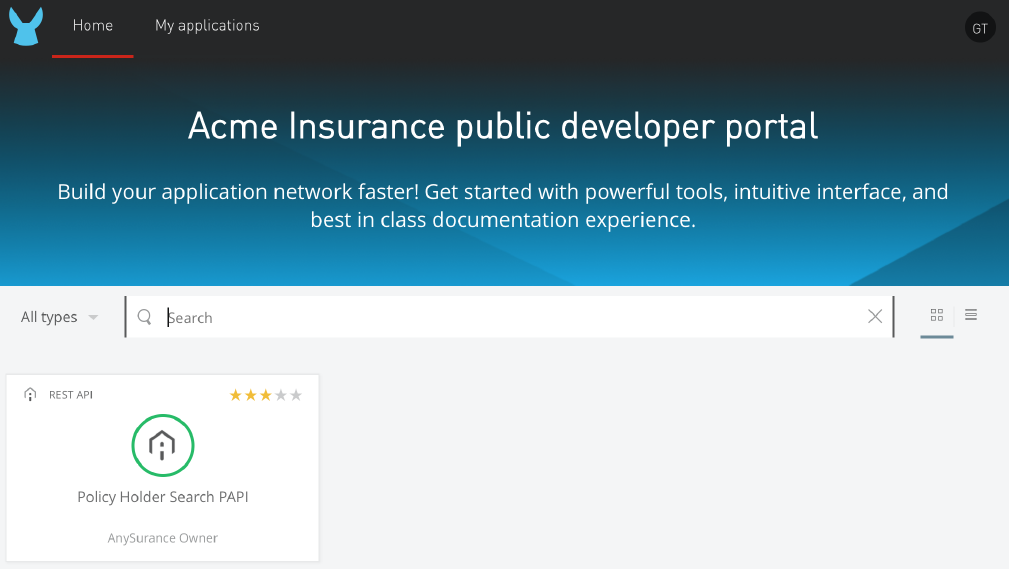

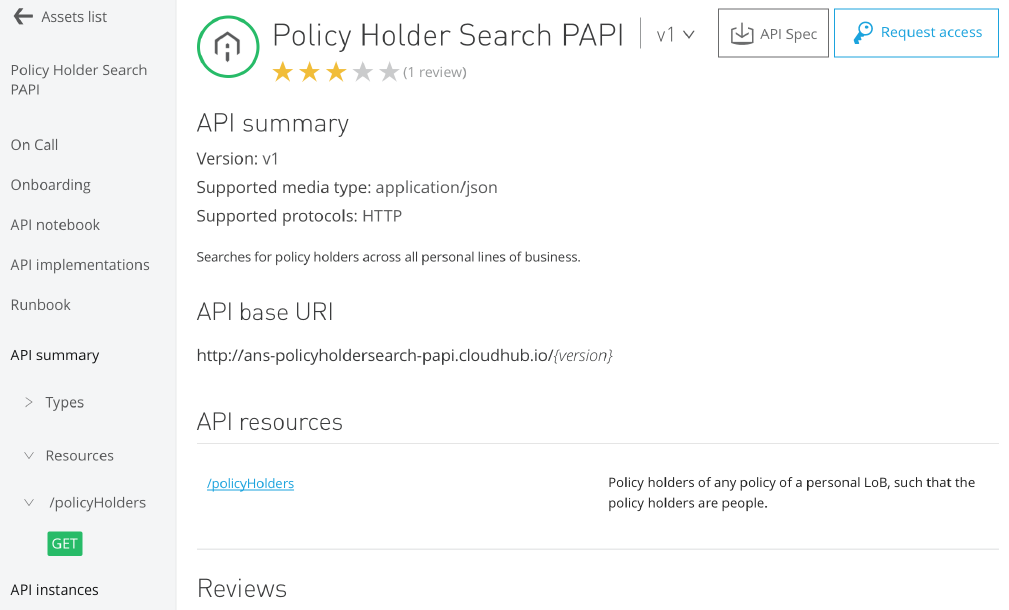

•Publish APIs and related assets for reuse

4.1. Productizing Acme Insurance's strategic initiatives

4.1.1. Translating strategic initiatives into products, projects and features

Acme Insurance has committed to realize the two most pressing strategic initiatives introduced

earlier (Acme Insurance's motivation to change):

•Open-up to Aggregators for motor insurance

•Provide self-service capabilities to customers

All relevant stakeholders come together under the guidance of the C4E to concretize these

strategic initiatives into two minimally viable products and their defining features:

•The "Aggregator Integration" product

◦with the "Create quote for aggregators" feature as the defining feature

•The "Customer Self-Service App" product

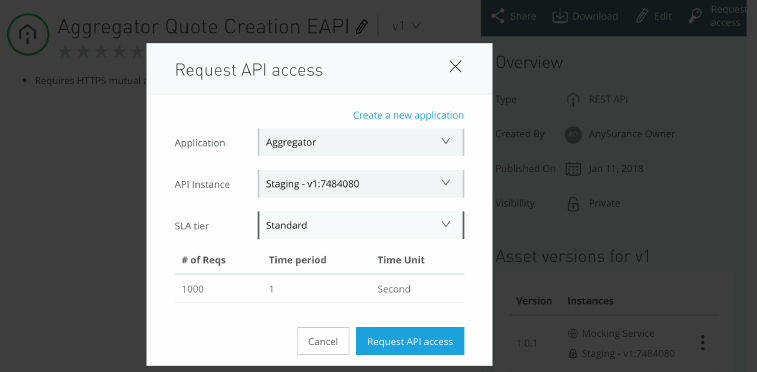

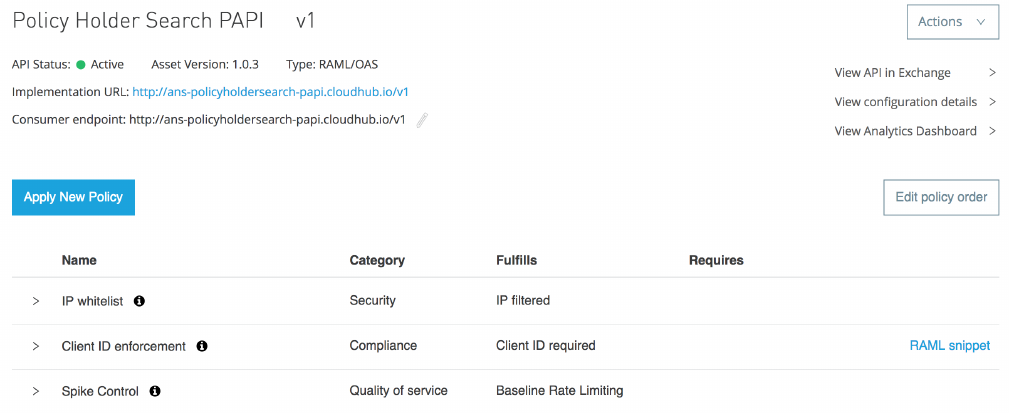

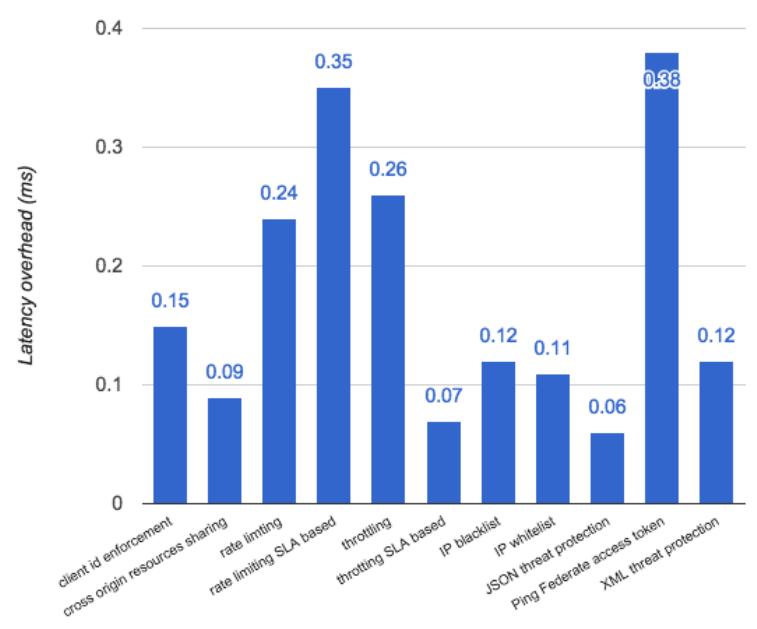

◦with the "Retrieve policy holder summary" feature as one defining feature