ARMv8 Instruction Set Overview

PRD03-GENC-010197 Copyright © 2009-2011 ARM Limited. All rights reserved. Page 1 of 112

ARMv8 Instruction Set Overview

Architecture Group

Document number: PRD03-GENC-010197 15.0

Date of Issue: 11 November 2011

© Copyright ARM Limited 2009-2011. All rights reserved.

Abstract

This document provides a high-level overview of the ARMv8 instruction sets, being mainly the new A64 instruction set used in AArch64 state but also those new instructions added to the A32 and T32 instruction sets since ARMv7-A for use in AArch32 state. For A64 this document specifies the preferred architectural assembly language notation to represent the new instruction set.

Keywords

AArch64, A64, AArch32, A32, T32, ARMv8

Proprietary Notice

This specification is protected by copyright and the practice or implementation of the information herein may be

protected by one or more patents or pending applications. No part of this specification may be reproduced in any

form by any means without the express prior written permission of ARM. No license, express or implied, by

estoppel or otherwise to any intellectual property rights is granted by this specification.

Your access to the information in this specification is conditional upon your acceptance that you will not use or

permit others to use the information for the purposes of determining whether implementations of the ARM

architecture infringe any third party patents.

This specification is provided “as is”. ARM makes no representations or warranties, either express or implied,

included but not limited to, warranties of merchantability, fitness for a particular purpose, or non-infringement, that

the content of this specification is suitable for any particular purpose or that any practice or implementation of the

contents of the specification will not infringe any third party patents, copyrights, trade secrets, or other rights.

This specification may include technical inaccuracies or typographical errors.

To the extent not prohibited by law, in no event will ARM be liable for any damages, including without limitation

any direct loss, lost revenue, lost profits or data, special, indirect, consequential, incidental or punitive damages,

however caused and regardless of the theory of liability, arising out of or related to any furnishing, practicing,

modifying or any use of this specification, even if ARM has been advised of the possibility of such damages.

Words and logos marked with ® or TM are registered trademarks or trademarks of ARM Limited, except as

otherwise stated below in this proprietary notice. Other brands and names mentioned herein may be the

trademarks of their respective owners.

Copyright © 2009-2011 ARM Limited

110 Fulbourn Road, Cambridge, England CB1 9NJ

Restricted Rights Legend: Use, duplication or disclosure by the United States Government is subject to the

restrictions set forth in DFARS 252.227-7013 (c)(1)(ii) and FAR 52.227-19.

This document is Non-Confidential but any disclosure by you is subject to you providing notice to and the

acceptance by the recipient of, the conditions set out above.

In this document, where the term ARM is used to refer to the company it means “ARM or any of its subsidiaries as

appropriate”.

Contents

1 ABOUT THIS DOCUMENT

1.1 Change control

1.1.1 Current status and anticipated changes

1.1.2 Change history

1.2 References

1.3 Terms and abbreviations

2 INTRODUCTION

3 A64 OVERVIEW

3.1 Distinguishing 32-bit and 64-bit Instructions

3.2 Conditional Instructions

3.3 Addressing Features

3.3.1 Register Indexed Addressing

3.3.2 PC-relative Addressing

3.4 The Program Counter (PC)

3.5 Memory Load-Store

3.5.1 Bulk Transfers

3.5.2 Exclusive Accesses

3.5.3 Load-Acquire, Store-Release

3.6 Integer Multiply/Divide

3.7 Floating Point

3.8 Advanced SIMD

4 A64 ASSEMBLY LANGUAGE

4.1 Basic Structure

4.2 Instruction Mnemonics

4.3 Condition Codes

4.4 Register Names

4.4.1 General purpose (integer) registers

4.4.2 FP/SIMD registers

4.5 Load/Store Addressing Modes

5 A64 INSTRUCTION SET

5.1 Control Flow

5.1.1 Conditional Branch

5.1.2 Unconditional Branch (immediate)

5.1.3 Unconditional Branch (register)

5.2 Memory Access

5.2.1 Load-Store Single Register

5.2.2 Load-Store Single Register (unscaled offset)

5.2.3 Load Single Register (pc-relative, literal load)

5.2.4 Load-Store Pair

5.2.5 Load-Store Non-temporal Pair

5.2.6 Load-Store Unprivileged

5.2.7 Load-Store Exclusive

5.2.8 Load-Acquire / Store-Release

5.2.9 Prefetch Memory

5.3 Data Processing (immediate)

5.3.1 Arithmetic (immediate)

5.3.2 Logical (immediate)

5.3.3 Move (wide immediate)

5.3.4 Address Generation

5.3.5 Bitfield Operations

5.3.6 Extract (immediate)

5.3.7 Shift (immediate)

5.3.8 Sign/Zero Extend

5.4 Data Processing (register)

5.4.1 Arithmetic (shifted register)

5.4.2 Arithmetic (extending register)

5.4.3 Logical (shifted register)

5.4.4 Variable Shift

5.4.5 Bit Operations

5.4.6 Conditional Data Processing

5.4.7 Conditional Comparison

5.5 Integer Multiply / Divide

5.5.1 Multiply

5.5.2 Divide

5.6 Scalar Floating-point

5.6.1 Floating-point/SIMD Scalar Memory Access

5.6.2 Floating-point Move (register)

5.6.3 Floating-point Move (immediate)

5.6.4 Floating-point Convert

5.6.5 Floating-point Round to Integral

5.6.6 Floating-point Arithmetic (1 source)

5.6.7 Floating-point Arithmetic (2 source)

5.6.8 Floating-point Min/Max

5.6.9 Floating-point Multiply-Add

5.6.10 Floating-point Comparison

5.6.11 Floating-point Conditional Select

5.7 Advanced SIMD

5.7.1 Overview

5.7.2 Advanced SIMD Mnemonics

5.7.3 Data Movement

5.7.4 Vector Arithmetic

5.7.5 Scalar Arithmetic

5.7.6 Vector Widening/Narrowing Arithmetic

5.7.7 Scalar Widening/Narrowing Arithmetic

5.7.8 Vector Unary Arithmetic

5.7.9 Scalar Unary Arithmetic

5.7.10 Vector-by-element Arithmetic

5.7.11 Scalar-by-element Arithmetic

5.7.12 Vector Permute

5.7.13 Vector Immediate

5.7.14 Vector Shift (immediate)

5.7.15 Scalar Shift (immediate)

5.7.16 Vector Floating Point / Integer Convert

5.7.17 Scalar Floating Point / Integer Convert

5.7.18 Vector Reduce (across lanes)

5.7.19 Vector Pairwise Arithmetic

5.7.20 Scalar Reduce (pairwise)

5.7.21 Vector Table Lookup

5.7.22 Vector Load-Store Structure

5.7.23 AArch32 Equivalent Advanced SIMD Mnemonics

5.7.24 Crypto Extension

5.8 System Instructions

5.8.1 Exception Generation and Return

5.8.2 System Register Access

5.8.3 System Management

5.8.4 Architectural Hints

5.8.5 Barriers and CLREX

6 A32 & T32 INSTRUCTION SETS

6.1 Partial Deprecation of IT

6.2 Load-Acquire / Store-Release

6.2.1 Non-Exclusive

6.2.2 Exclusive

6.3 VFP Scalar Floating-point

6.3.1 Floating-point Conditional Select

6.3.2 Floating-point minNum/maxNum

6.3.3 Floating-point Convert (floating-point to integer)

6.3.4 Floating-point Convert (half-precision to/from double-precision)

6.3.5 Floating-point Round to Integral

6.4 Advanced SIMD Floating-Point

6.4.1 Floating-point minNum/maxNum

6.4.2 Floating-point Convert

6.4.3 Floating-point Round to Integral

6.5 Crypto Extension

6.6 System Instructions

6.6.1 Halting Debug

6.6.2 Barriers and Hints

1 ABOUT THIS DOCUMENT

1.1 Change control

1.1.1 Current status and anticipated changes

This document is a beta release specification and further changes to correct defects and improve clarity should be

expected.

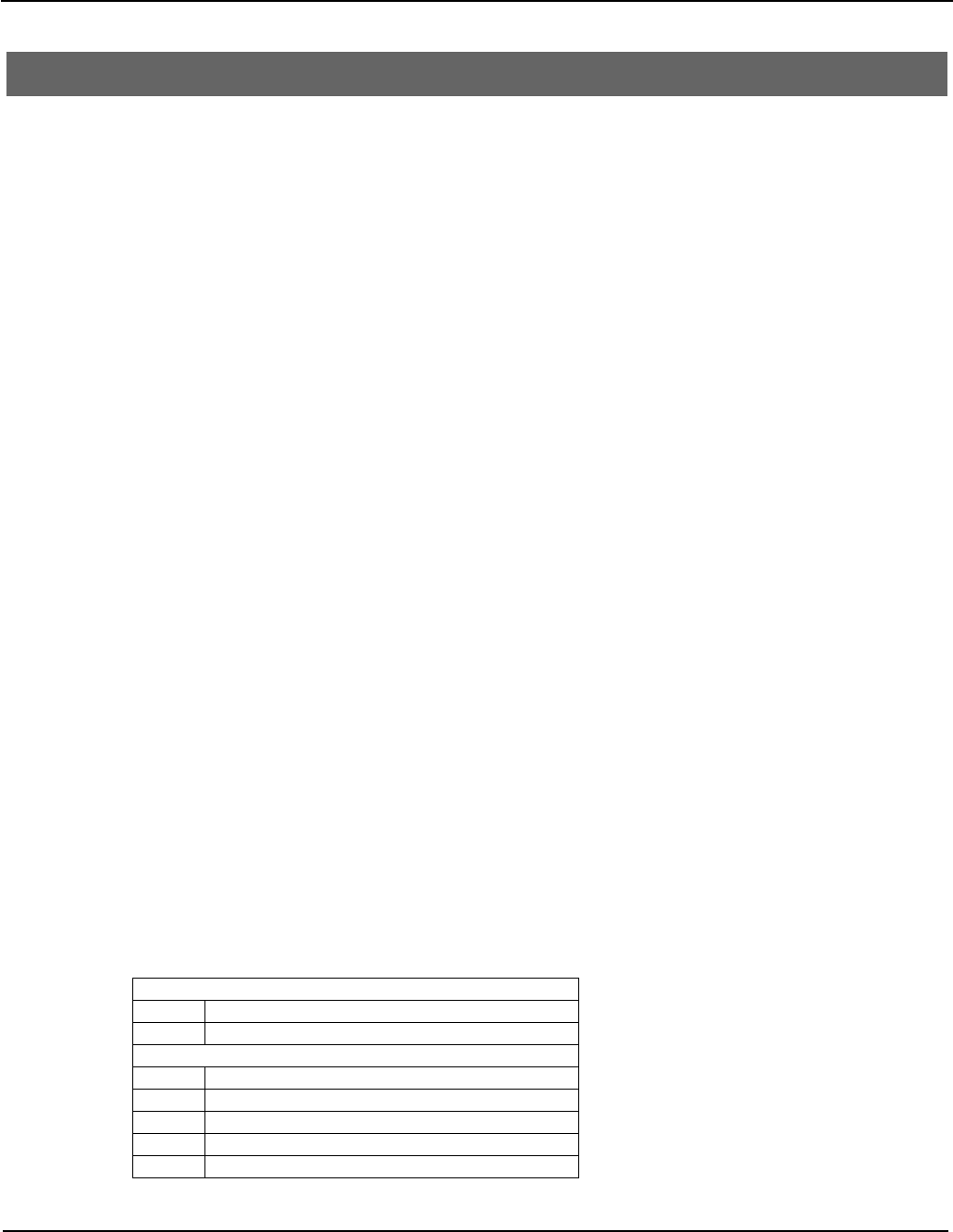

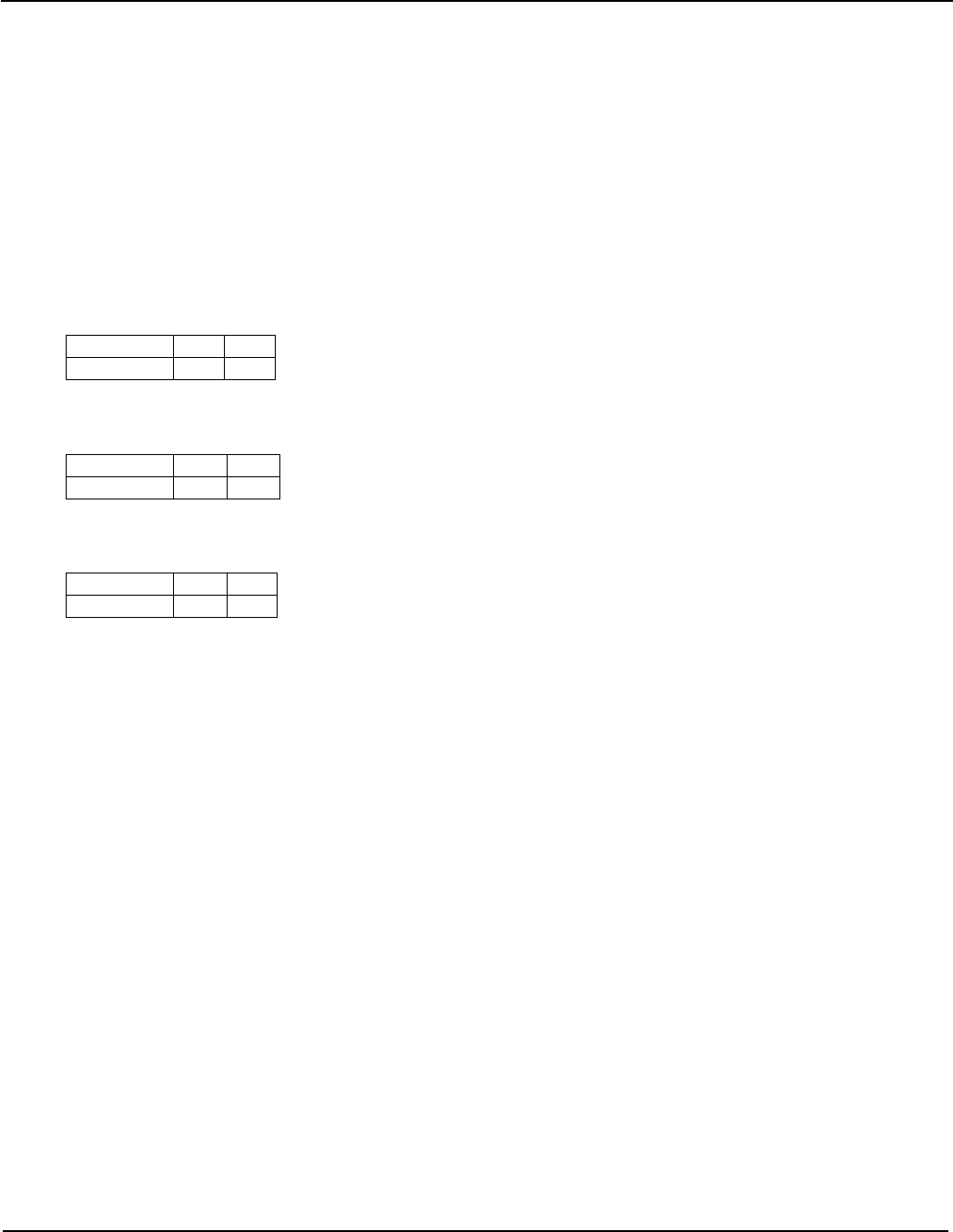

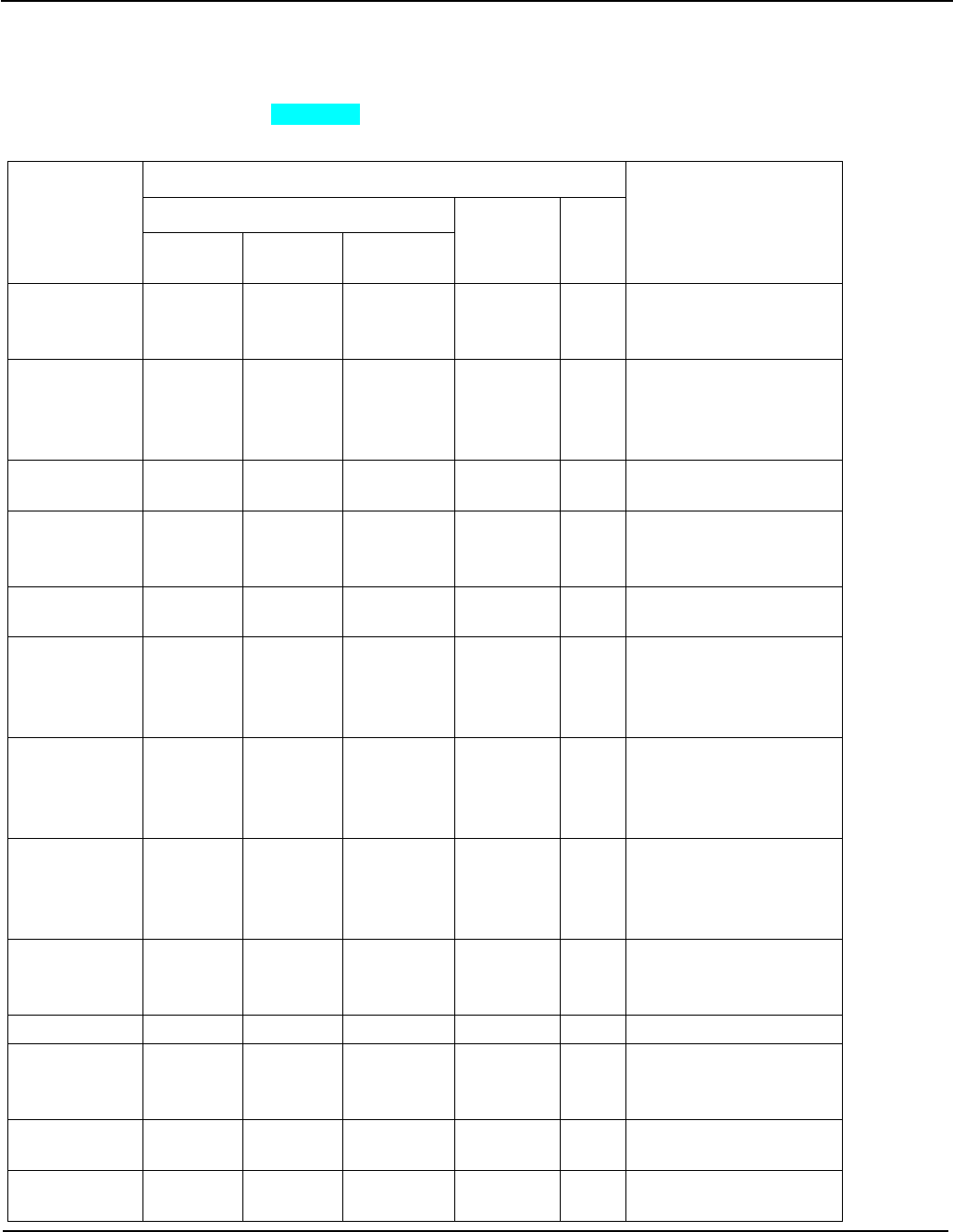

1.1.2 Change history

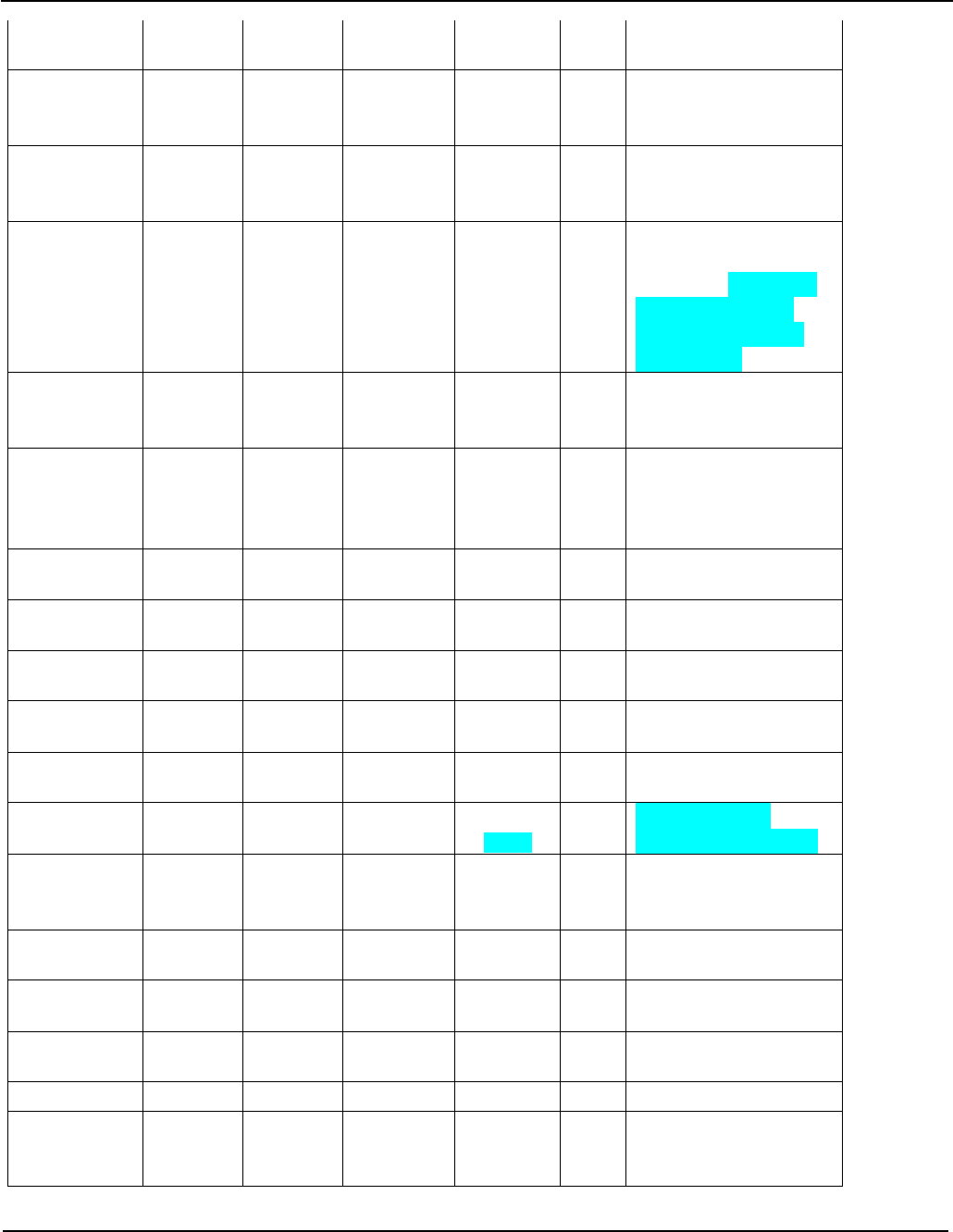

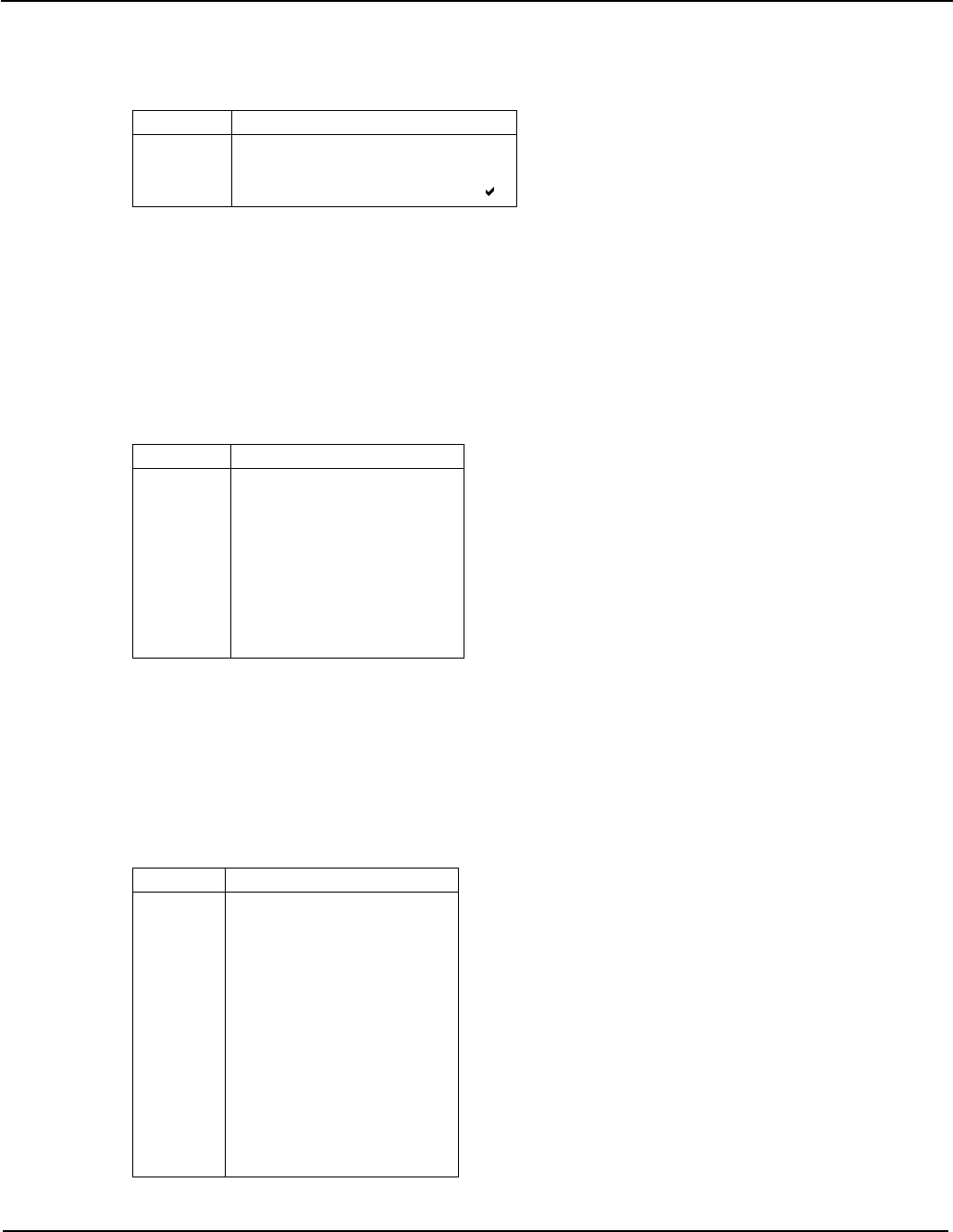

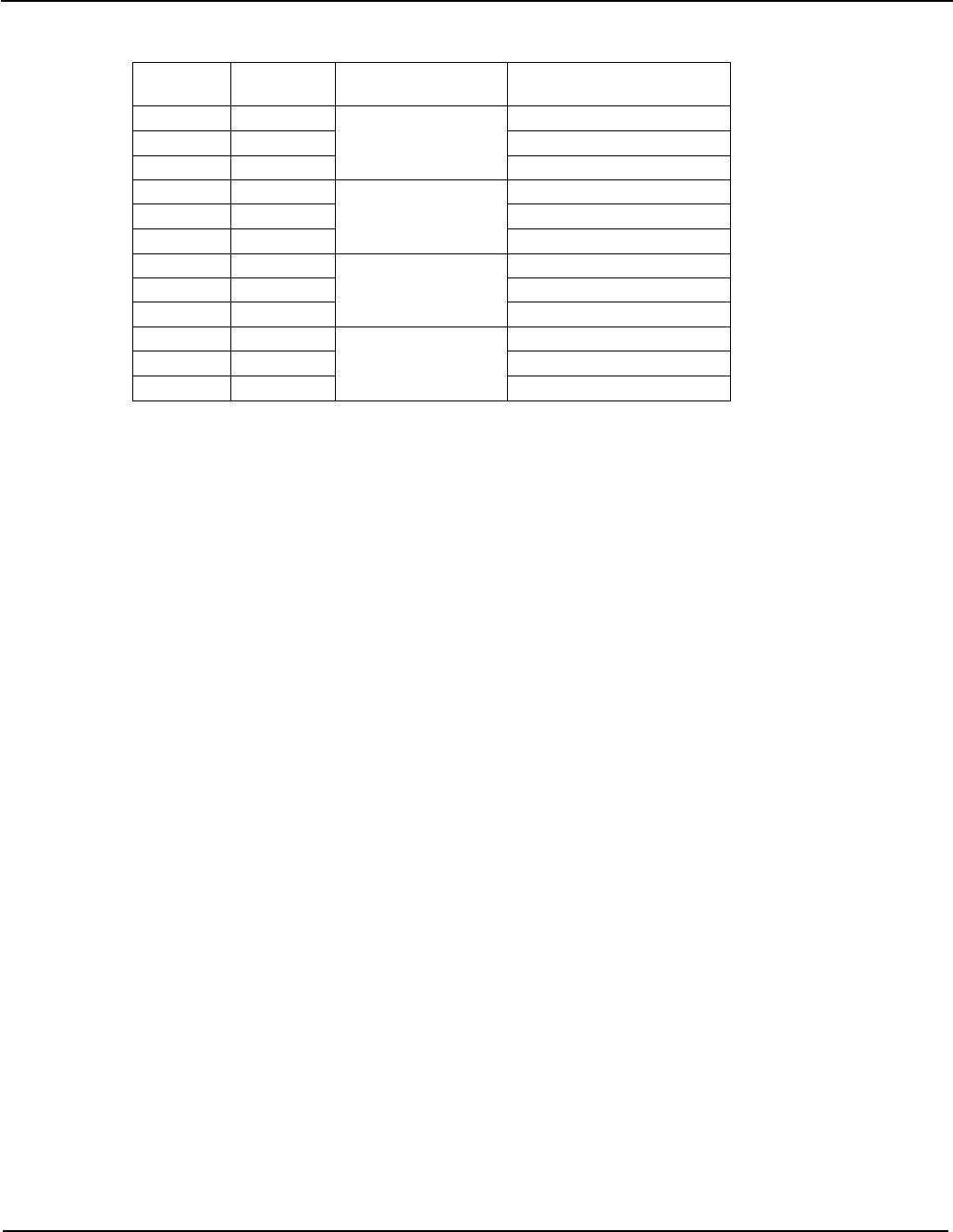

| Issue | Date | By | Change |
| --- | --- | --- | --- |
| | | NJS | Previous releases tracked in Domino |
| 7.0 | 17th December 2010 | NJS | Beta0 release |
| 8.0 | 25th February 2011 | NJS | Beta0 update 1 |
| 9.0 | 20th April 2011 | NJS | Beta1 release |
| 10.0 | 14th July 2011 | NJS | Beta2 release |
| 11.0 | 9th September 2011 | NJS | Beta2 update 1 |
| 12.0 | 28th September 2011 | NJS | Beta3 release |
| 13.0 | 28th October 2011 | NJS | Beta3 update 1 |
| 14.0 | 28th October 2011 | NJS | Restructured and incorporated new AArch32 instructions. |
| 15.0 | 11th November 2011 | NJS | First non-confidential release. Describe partial deprecation of the IT instruction. Rename DRET to DRPS and clarify its behavior. |

1.2 References

This document refers to the following documents.

| Reference | Author | Document number | Title |
| --- | --- | --- | --- |
| [v7A] | ARM | ARM DDI 0406 | ARM® Architecture Reference Manual, ARMv7-A and ARMv7-R edition |
| [AES] | NIST | FIPS 197 | Announcing the Advanced Encryption Standard (AES) |
| [SHA] | NIST | FIPS 180-2 | Announcing the Secure Hash Standard (SHA) |
| [GCM] | McGrew and Viega | n/a | The Galois/Counter Mode of Operation (GCM) |

1.3 Terms and abbreviations

This document uses the following terms and abbreviations.

| Term | Meaning |
| --- | --- |
| AArch64 | The 64-bit general purpose register width state of the ARMv8 architecture. |
| AArch32 | The 32-bit general purpose register width state of the ARMv8 architecture, broadly compatible with the ARMv7-A architecture. Note: The register width state can change only upon a change of exception level. |
| A64 | The new instruction set available when in AArch64 state, and described in this document. |
| A32 | The instruction set named ARM in the ARMv7 architecture, which uses 32-bit instructions. The new A32 instructions added by ARMv8 are described in §6. |
| T32 | The instruction set named Thumb in the ARMv7 architecture, which uses 16-bit and 32-bit instructions. The new T32 instructions added by ARMv8 are described in §6. |
| UNALLOCATED | Describes an opcode or combination of opcode fields which do not select a valid instruction at the current privilege level. Executing an UNALLOCATED encoding will usually result in taking an Undefined Instruction exception. |
| RESERVED | Describes an instruction field value within an otherwise allocated instruction which should not be used within this specific instruction context, for example a value which selects an unsupported data type or addressing mode. An instruction encoding which contains a RESERVED field value is an UNALLOCATED encoding. |

2 INTRODUCTION

This document provides an overview of the ARMv8 instruction sets. Most of the document forms a description of

the new A64 instruction set used when the processor is operating in AArch64 register width state, and defines its

preferred architectural assembly language.

Section 6 below lists the extensions introduced by ARMv8 to the A32 and T32 instruction sets – known in ARMv7

as the ARM and Thumb instruction sets respectively – which are available when the processor is operating in

AArch32 register width state. The A32 and T32 assembly language syntax is unchanged from ARMv7.

In the syntax descriptions below the following conventions are used:

UPPER   UPPER-CASE text is fixed, while lower-case text is variable. So register name Xn indicates that the ‘X’ is required, followed by a variable register number, e.g. X29.

< >   Any item bracketed by < and > is a short description of a type of value to be supplied by the user in that position. A longer description of the item is normally supplied by subsequent text.

{ }   Any item bracketed by curly braces { and } is optional. A description of the item and of how its presence or absence affects the instruction is normally supplied by subsequent text. In some cases curly braces are actual symbols in the syntax, for example surrounding a register list, and such cases will be called out in the surrounding text.

[ ]   A list of alternative characters may be bracketed by [ and ]. A single one of the characters can be used in that position and the subsequent text will describe the meaning of the alternatives. In some cases the symbols [ and ] are part of the syntax itself, such as addressing modes and vector elements, and such cases will be called out in the surrounding text.

a | b   Alternative words are separated by a vertical bar | and may be surrounded by parentheses to delimit them, e.g. U(ADD|SUB)W represents UADDW or USUBW.

+/-   This indicates an optional + or - sign. If neither is coded, then + is assumed.

3 A64 OVERVIEW

The A64 instruction set provides similar functionality to the A32 and T32 instruction sets in AArch32 or ARMv7. However, just as the addition of 32-bit instructions to the T32 instruction set rationalized some of the ARM ISA behaviors, the A64 instruction set includes further rationalizations. The highlights of the new instruction set are as follows:

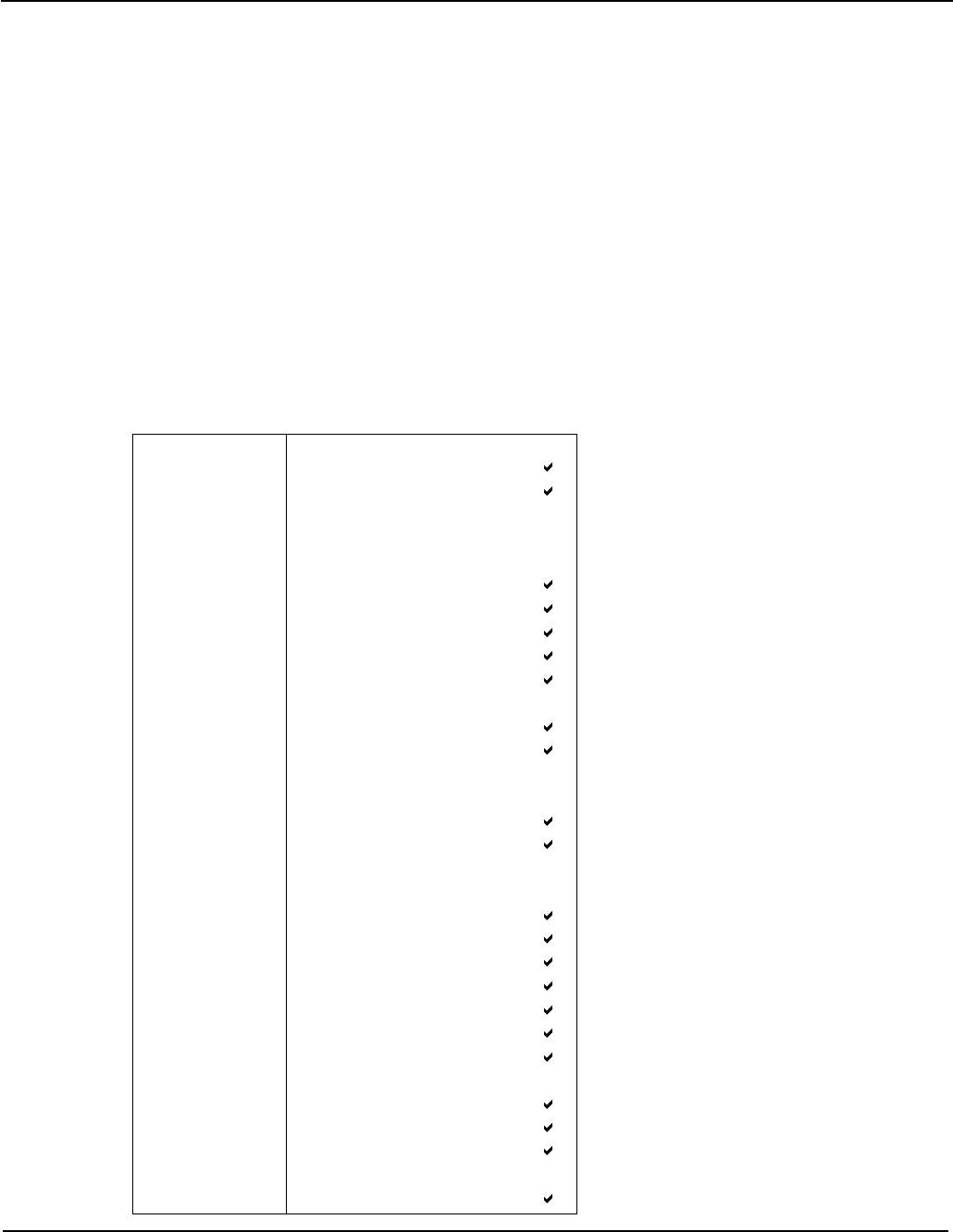

• A clean, fixed length instruction set – instructions are 32 bits wide, register fields are contiguous bit fields

at fixed positions, immediate values mostly occupy contiguous bit fields.

• Access to a larger general-purpose register file with 31 unbanked registers (0-30), with each register

extended to 64 bits. General registers are encoded as 5-bit fields with register number 31 (0b11111)

being a special case representing:

• Zero Register: in most cases register number 31 reads as zero when used as a source register, and

discards the result when used as a destination register.

• Stack Pointer: when used as a load/store base register, and in a small selection of arithmetic

instructions, register number 31 provides access to the current stack pointer.

• The PC is never accessible as a named register. Its use is implicit in certain instructions such as PC-

relative load and address generation. The only instructions which cause a non-sequential change to the

PC are the designated Control Flow instructions (see §5.1) and exceptions. The PC cannot be specified

as the destination of a data processing instruction or load instruction.

• The procedure call link register (LR) is unbanked, general-purpose register 30; exceptions save the restart

PC to the target exception level’s ELR system register.

• Scalar load/store addressing modes are uniform across all sizes and signedness of scalar integer, floating

point and vector registers.

• A load/store immediate offset may be scaled by the access size, increasing its effective offset range.

• A load/store index register may contain a 64-bit or 32-bit signed/unsigned value, optionally scaled by the

access size.

• Arithmetic instructions for address generation which mirror the load/store addressing modes, see §3.3.

• PC-relative load/store and address generation with a range of ±4GiB is possible using just two instructions

without the need to load an offset from a literal pool.

• PC-relative offsets for literal pool access and most conditional branches are extended to ±1MiB, and for

unconditional branches and calls to ±128MiB.

• There are no multiple register LDM, STM, PUSH and POP instructions, but load-store of a non-contiguous

pair of registers is available.

• Unaligned addresses are permitted for most loads and stores, including paired register accesses, floating

point and SIMD registers, with the exception of exclusive and ordered accesses (see §3.5.2).

• Reduced conditionality. Fewer instructions can set the condition flags. Only conditional branches, and a

handful of data processing instructions read the condition flags. Conditional or predicated execution is not

provided, and there is no equivalent of T32’s IT instruction (see §3.2).

• A shift option for the final register operand of data processing instructions is available:

o Immediate shifts only (as in T32).

o No RRX shift, and no ROR shift for ADD/SUB.

o The ADD/SUB/CMP instructions can first sign or zero-extend a byte, halfword or word in the final

register operand, followed by an optional left shift of 1 to 4 bits.

• Immediate generation replaces A32’s rotated 8-bit immediate with operation-specific encodings:

o Arithmetic instructions have a simple 12-bit immediate, with an optional left shift by 12.

o Logical instructions provide sophisticated replicating bit mask generation.

o Other immediates may be constructed inline in 16-bit “chunks”, extending upon the MOVW and

MOVT instructions of AArch32.

• Floating point support is similar to AArch32 VFP but with some extensions, as described in §3.7.

• Floating point and Advanced SIMD processing share a register file, in a similar manner to AArch32, but

extended to thirty-two 128-bit registers. Smaller registers are no longer packed into larger registers, but

are mapped one-to-one to the low-order bits of the 128-bit register, as described in §4.4.2.

• There are no SIMD or saturating arithmetic instructions which operate on the general purpose registers,

such operations being available only as part of the Advanced SIMD processing, described in §5.7.

• There is no access to CPSR as a single register, but new system instructions provide the ability to

atomically modify individual processor state fields, see §5.8.2.

• The concept of a “coprocessor” is removed from the architecture. A set of system instructions described in

§5.8 provides:

o System register access

o Cache/TLB management

o VA→PA translation

o Barriers and CLREX

o Architectural hints (WFI, etc)

o Debug

3.1 Distinguishing 32-bit and 64-bit Instructions

Most integer instructions in the A64 instruction set have two forms, which operate on either 32-bit or 64-bit values

within the 64-bit general-purpose register file. Where a 32-bit instruction form is selected, the following holds true:

• The upper 32 bits of the source registers are ignored;

• The upper 32 bits of the destination register are set to ZERO;

• Right shifts/rotates inject at bit 31, instead of bit 63;

• The condition flags, where set by the instruction, are computed from the lower 32 bits.

This distinction applies even when the result(s) of a 32-bit instruction form would be indistinguishable from the

lower 32 bits computed by the equivalent 64-bit instruction form. For example a 32-bit bitwise ORR could be

performed using a 64-bit ORR, and simply ignoring the top 32 bits of the result. But the A64 instruction set includes

separate 32 and 64-bit forms of the ORR instruction.
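
The rules listed above for a 32-bit instruction form can be modelled in a few lines of Python. This is an illustrative sketch only: the function names are hypothetical, registers are modelled as unsigned Python integers, and only ORR, a logical shift right and a flag-setting add are shown.

```python
MASK32 = (1 << 32) - 1
MASK64 = (1 << 64) - 1


def orr_x(xn, xm):
    """64-bit form: all 64 bits of both sources take part."""
    return (xn | xm) & MASK64


def orr_w(xn, xm):
    """32-bit form: upper source bits ignored, upper result bits zero."""
    return (xn | xm) & MASK32


def lsr_w(xn, shift):
    """32-bit logical shift right (shift in 0..31): zeros enter at bit 31."""
    return (xn & MASK32) >> shift


def adds_w(xn, xm):
    """32-bit flag-setting add: flags come from the low 32 bits only.

    Returns (result, N, Z, C, V).
    """
    a, b = xn & MASK32, xm & MASK32
    total = a + b
    result = total & MASK32
    n = result >> 31
    z = int(result == 0)
    c = int(total > MASK32)
    # Signed overflow: both operands differ in sign from the result.
    v = (((a ^ result) & (b ^ result)) >> 31) & 1
    return result, n, z, c, v
```

Passing a register whose upper half holds stale data, such as 0xFFFFFFFF000000F0, to `orr_w` gives a result whose upper 32 bits are zero, whereas `orr_x` preserves them.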

Rationale: The C/C++ LP64 and LLP64 data models – expected to be the most commonly used on AArch64 –

both define the frequently used int, short and char types to be 32 bits or less. By maintaining this semantic

information in the instruction set, implementations can exploit this information to avoid expending energy or cycles

to compute, forward and store the unused upper 32 bits of such data types. Implementations are free to exploit

this freedom in whatever way they choose to save energy.

As well as distinct sign/zero-extend instructions, the A64 instruction set also provides the ability to extend and shift

the final source register of an ADD, SUB or CMP instruction and the index register of a load/store instruction. This

allows for an efficient implementation of array index calculations involving a 64-bit array pointer and 32-bit array

index.

The assembly language notation is designed to allow the identification of registers holding 32-bit values as distinct

from those holding 64-bit values. As well as aiding readability, tools may be able to use this to perform limited type

checking, to identify programming errors resulting from the change in register size.

3.2 Conditional Instructions

The A64 instruction set does not include the concept of predicated or conditional execution. Benchmarking shows

that modern branch predictors work well enough that predicated execution of instructions does not offer sufficient

benefit to justify its significant use of opcode space, and its implementation cost in advanced implementations.

A very small set of “conditional data processing” instructions are provided. These instructions are unconditionally

executed but use the condition flags as an extra input to the instruction. This set has been shown to be beneficial

in situations where conditional branches predict poorly, or are otherwise inefficient.

The conditional instruction types are:

• Conditional branch: the traditional ARM conditional branch, together with compare and branch if register

zero/non-zero, and test single bit in register and branch if zero/non-zero – all with increased displacement.

• Add/subtract with carry: the traditional ARM instructions, for multi-precision arithmetic, checksums, etc.

• Conditional select with increment, negate or invert: conditionally select between one source register and a

second incremented/negated/inverted/unmodified source register. Benchmarking reveals these to be the

highest frequency uses of single conditional instructions, e.g. for counting, absolute value, etc. These

instructions also implement:

o Conditional select (move): sets the destination to one of two source registers, selected by the

condition flags. Short conditional sequences can be replaced by unconditional instructions

followed by a conditional select.

o Conditional set: conditionally select between 0 and 1 or -1, for example to materialize the

condition flags as a Boolean value or mask in a general register.

• Conditional compare: sets the condition flags to the result of a comparison if the original condition was

true, else to an immediate value. Permits the flattening of nested conditional expressions without using

conditional branches or performing Boolean arithmetic within general registers.
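
The behaviour of the conditional select family and of conditional compare can be sketched as follows. This is a simplified model, not a definition: the Python function names merely echo the operations described above, flags are packed as a 4-bit NZCV value, the condition is passed as an already-evaluated Boolean, and `both_equal` is a hypothetical example of flattening the nested test "a == 0 and b == 5".

```python
MASK64 = (1 << 64) - 1
Z_FLAG = 0b0100  # bit position of Z within a packed NZCV value


def csel(cond, xn, xm):
    return xn if cond else xm


def csinc(cond, xn, xm):
    return xn if cond else (xm + 1) & MASK64


def csinv(cond, xn, xm):
    return xn if cond else ~xm & MASK64


def csneg(cond, xn, xm):
    return xn if cond else -xm & MASK64


def cset(cond):
    """Conditional set to 1 or 0, built from select-with-increment."""
    return csinc(not cond, 0, 0)


def csetm(cond):
    """Conditional set to all-ones or 0, built from select-with-invert."""
    return csinv(not cond, 0, 0)


def cmp_flags(xn, xm):
    """NZCV produced by comparing (subtracting) two 64-bit values."""
    a, b = xn & MASK64, xm & MASK64
    diff = (a - b) & MASK64
    n = diff >> 63
    z = int(diff == 0)
    c = int(a >= b)  # carry set means no borrow
    v = (((a ^ b) & (a ^ diff)) >> 63) & 1
    return (n << 3) | (z << 2) | (c << 1) | v


def ccmp(cond, xn, xm, nzcv):
    """Compare if cond held, otherwise load the immediate flags."""
    return cmp_flags(xn, xm) if cond else nzcv


def both_equal(a, b):
    """a == 0 and b == 5, evaluated without a branch."""
    flags = cmp_flags(a, 0)
    flags = ccmp(bool(flags & Z_FLAG), b, 5, 0b0000)
    return bool(flags & Z_FLAG)
```

In `both_equal` the immediate 0b0000 clears Z when the first comparison fails, so a single final test of Z covers both conditions.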

3.3 Addressing Features

The prime motivation for a 64-bit architecture is access to a larger virtual address space. The AArch64 memory

translation system supports a 49-bit virtual address (48 bits per translation table). Virtual addresses are sign-

extended from 49 bits, and stored within a 64-bit pointer. Optionally, under control of a system register, the most

significant 8 bits of a 64-bit pointer may hold a “tag” which will be ignored when used as a load/store address or

the target of an indirect branch.
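
As a rough illustration of the pointer layout described above, the following sketch sign-extends a 49-bit virtual address into a 64-bit pointer and removes a tag from the top byte. The helper names are hypothetical, and the way the tag is discarded (replacing bits 63:56 with copies of bit 55) is an assumption of this model rather than something stated in this overview.

```python
MASK64 = (1 << 64) - 1
VA_BITS = 49  # virtual address width stated in the text


def sign_extend(value, bits):
    """Sign-extend the low `bits` bits of value to a 64-bit pattern."""
    value &= (1 << bits) - 1
    sign = 1 << (bits - 1)
    return ((value ^ sign) - sign) & MASK64


def is_canonical(pointer):
    """True if bits 63:48 of the pointer are all copies of bit 48."""
    return sign_extend(pointer, VA_BITS) == (pointer & MASK64)


def strip_tag(pointer):
    """Assumed tag model: replace bits 63:56 with copies of bit 55."""
    return sign_extend(pointer, 56)
```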

3.3.1 Register Indexed Addressing

The A64 instruction set extends on 32-bit T32 addressing modes, allowing a 64-bit index register to be added to

the 64-bit base register, with optional scaling of the index by the access size. Additionally it provides for sign or

zero-extension of a 32-bit value within an index register, again with optional scaling.
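
The address arithmetic described above can be sketched as below. The mode names "LSL", "UXTW" and "SXTW" are used here as labels for an unmodified 64-bit index, a zero-extended 32-bit index and a sign-extended 32-bit index respectively; the function names and parameters are hypothetical.

```python
MASK32 = (1 << 32) - 1
MASK64 = (1 << 64) - 1


def extend_index(index, mode):
    """Interpret the index register according to the extend mode."""
    if mode == "LSL":   # full 64-bit index
        return index & MASK64
    if mode == "UXTW":  # zero-extend the low 32 bits
        return index & MASK32
    if mode == "SXTW":  # sign-extend the low 32 bits
        low = index & MASK32
        return (low ^ 0x8000_0000) - 0x8000_0000
    raise ValueError(f"unknown extend mode: {mode}")


def effective_address(base, index, mode="LSL", access_size=1, scaled=False):
    """base + extended index, optionally scaled by the access size in bytes."""
    shift = access_size.bit_length() - 1 if scaled else 0
    return (base + (extend_index(index, mode) << shift)) & MASK64
```

For example, a 64-bit array pointer combined with a signed 32-bit element index of -1 and a 4-byte access size yields an address 4 bytes below the base.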

These register index addressing modes provide a useful performance gain if they can be performed within a single

cycle, and it is believed that at least some implementations will be able to do this. However, based on

implementation experience with AArch32, it is expected that other implementations will need an additional cycle to

execute such addressing modes.

Rationale: The architects intend that implementations should be free to fine-tune the performance trade-offs

within each implementation, and note that providing an instruction which in some implementations takes two

cycles, is preferable to requiring the dynamic grouping of two independent instructions in an implementation that

can perform this address arithmetic in a single cycle.

3.3.2 PC-relative Addressing

There is improved support for position-independent code and data addressing:

• PC-relative literal loads have an offset range of ±1MiB. This permits fewer literal pools, and more sharing

of literal data between functions – reducing I-cache and TLB pollution.

• Most conditional branches have a range of ±1MiB, expected to be sufficient for the majority of conditional

branches which take place within a single function.

• Unconditional branches, including branch and link, have a range of ±128MiB. Expected to be sufficient to

span the static code segment of most executable load modules and shared objects, without needing

linker-inserted trampolines or “veneers”.

• PC-relative load/store and address generation with a range of ±4GiB may be performed inline using only

two instructions, i.e. without the need to load an offset from a literal pool.
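
One way to picture the two-instruction ±4GiB sequence is as a page-granular PC-relative step followed by a 12-bit offset within the page. The sketch below assumes a 4KiB page and a 21-bit signed page displacement, which together give the ±4GiB range quoted above; the function names are hypothetical.

```python
PAGE_SHIFT = 12            # assumed 4KiB page granule
PAGE_IMM_BITS = 21         # assumed signed page-displacement width


def split_address(pc, target):
    """Split target into (page displacement from pc, offset within page)."""
    page_delta = (target >> PAGE_SHIFT) - (pc >> PAGE_SHIFT)
    limit = 1 << (PAGE_IMM_BITS - 1)
    if not -limit <= page_delta < limit:
        raise ValueError("target is outside the +/-4GiB range")
    return page_delta, target & ((1 << PAGE_SHIFT) - 1)


def resolve(pc, page_delta, lo12):
    """Recombine: first instruction forms the page, second adds the offset."""
    page = ((pc >> PAGE_SHIFT) + page_delta) << PAGE_SHIFT
    return page + lo12
```

Because the first step works on whole pages, the low 12 bits of the PC do not affect the result, and the second instruction can supply the in-page offset either as an add immediate or as a load/store offset.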

3.4 The Program Counter (PC)

The current Program Counter (PC) cannot be referred to by number as if part of the general register file and

therefore cannot be used as the source or destination of arithmetic instructions, or as the base, index or transfer

register of load/store instructions. The only instructions which read the PC are those whose function is to compute

a PC-relative address (ADR, ADRP, literal load, and direct branches), and the branch-and-link instructions which

store it in the link register (BL and BLR). The only way to modify the Program Counter is using explicit control flow

instructions: conditional branch, unconditional branch, exception generation and exception return instructions.

Where the PC is read by an instruction to compute a PC-relative address, then its value is the address of the

instruction, i.e. unlike A32 and T32 there is no implied offset of 4 or 8 bytes.

3.5 Memory Load-Store

3.5.1 Bulk Transfers

The LDM, STM, PUSH and POP instructions do not exist in A64. However, bulk transfers can be constructed using the LDP and STP instructions, which load and store a pair of independent registers from consecutive memory locations, and which support unaligned addresses when accessing normal memory. The LDNP and STNP instructions additionally provide a “streaming” or “non-temporal” hint that the data does not need to be retained in caches. The PRFM (prefetch memory) instructions also include hints for “streaming” or “non-temporal” accesses, and allow targeting of a prefetch to a specific cache level.

3.5.2 Exclusive Accesses

Exclusive load-store is provided for a byte, halfword, word and doubleword. Exclusive access to a pair of doublewords permits atomic updates of a pair of pointers, for example circular list inserts. All exclusive accesses must be naturally aligned, and exclusive pair access must be aligned to twice the data size (i.e. 16 bytes for a 64-bit pair).
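
The alignment rule can be expressed as a small check. This is only a sketch of the rule as worded above, with hypothetical function names; sizes are in bytes.

```python
def exclusive_alignment(size_bytes, pair=False):
    """Required alignment: natural, or twice the data size for a pair."""
    return size_bytes * 2 if pair else size_bytes


def is_valid_exclusive(address, size_bytes, pair=False):
    """True if address meets the alignment required for the access."""
    return address % exclusive_alignment(size_bytes, pair) == 0
```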

3.5.3 Load-Acquire, Store-Release

Explicitly synchronising load and store instructions implement the release-consistency (RCsc) memory model,

reducing the need for explicit memory barriers, and providing a good match to emerging language standards for

shared memory. The instructions exist in both exclusive and non-exclusive forms, and require natural address

alignment. See §5.2.8 for more details.

3.6 Integer Multiply/Divide

Including 32 and 64-bit multiply, with accumulation:

32 ± (32 × 32) → 32

64 ± (64 × 64) → 64

± (32 × 32) → 32

± (64 × 64) → 64

Widening multiply (signed and unsigned), with accumulation:

64 ± (32 × 32) → 64

± (32 × 32) → 64

(64 × 64) → hi64 <127:64>

Multiply instructions write a single register. A 64 × 64 to 128-bit multiply requires a sequence of two instructions to generate a pair of 64-bit result registers:

+ (64 × 64) → <63:0>

(64 × 64) → <127:64>
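
The two-instruction 128-bit product can be modelled as one operation returning the low half and one returning the high half. The function names below (`mul`, `umulh`, `smulh`) are labels chosen for this sketch, with registers held as unsigned 64-bit Python integers.

```python
MASK64 = (1 << 64) - 1


def to_signed(x):
    """Reinterpret a 64-bit pattern as a signed integer."""
    x &= MASK64
    return x - (1 << 64) if x >> 63 else x


def mul(xn, xm):
    """Low half: bits <63:0> of the product."""
    return (xn * xm) & MASK64


def umulh(xn, xm):
    """High half of the unsigned product: bits <127:64>."""
    return ((xn & MASK64) * (xm & MASK64)) >> 64


def smulh(xn, xm):
    """High half of the signed product: bits <127:64>."""
    return ((to_signed(xn) * to_signed(xm)) >> 64) & MASK64


def umul128(xn, xm):
    """Full unsigned product assembled from the two halves."""
    return (umulh(xn, xm) << 64) | mul(xn, xm)
```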

Signed and unsigned 32- and 64-bit divide are also provided. A remainder instruction is not provided, but a

remainder may be computed easily from the dividend, divisor and quotient using an MSUB instruction. There is no

hardware check for “divide by zero”, but this check can be performed in the shadow of a long latency division. A

divide by zero writes zero to the destination register.
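
The remainder computation mentioned above can be sketched as a divide followed by a multiply-subtract. In this model the signed divide is assumed to round towards zero, and the names `sdiv`, `msub` and `srem` are used only as labels for the sketch.

```python
MASK64 = (1 << 64) - 1


def to_signed(x):
    """Reinterpret a 64-bit pattern as a signed integer."""
    x &= MASK64
    return x - (1 << 64) if x >> 63 else x


def sdiv(xn, xm):
    """Signed divide, rounding towards zero; divide by zero gives zero."""
    n, d = to_signed(xn), to_signed(xm)
    if d == 0:
        return 0
    q = abs(n) // abs(d)
    if (n < 0) != (d < 0):
        q = -q
    return q & MASK64


def msub(xn, xm, xa):
    """Multiply-subtract: xa - (xn * xm), truncated to 64 bits."""
    return (xa - xn * xm) & MASK64


def srem(dividend, divisor):
    """remainder = dividend - quotient * divisor."""
    return msub(sdiv(dividend, divisor), divisor, dividend)
```

With a zero divisor the quotient is zero, so this sequence returns the dividend unchanged; software that needs different behaviour must test the divisor explicitly.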

3.7 Floating Point

AArch64 mandates hardware floating point wherever floating point arithmetic is required – there is no “soft-float”

variant of the AArch64 Procedure Calling Standard (PCS).

Floating point functionality is similar to AArch32 VFP, with the following changes:

• The deprecated “small vector” feature of VFP is removed.

• There are 32 S registers and 32 D registers. The S registers are not packed into D registers, but occupy

the low 32 bits of the corresponding D register. For example S31=D31<31:0>, not D15<63:32>.

• Load/store addressing modes identical to integer load/stores.

• Load/store of a pair of floating point registers.

• Floating point FCSEL and FCCMP equivalent to the integer CSEL and CCMP.

• Floating point FCMP and FCCMP instructions set the integer condition flags directly, and do not modify the

condition flags in the FPSR.

• All floating-point multiply-add and multiply-subtract instructions are “fused”.

• Convert between 64-bit integer and floating point.

• Convert FP to integer with explicit rounding direction (towards zero, towards +Inf, towards -Inf, to nearest

with ties to even, and to nearest with ties away from zero).

• Round FP to nearest integral FP with explicit rounding direction (as above).

• Direct conversion between half-precision and double-precision.

• FMINNM & FMAXNM implementing the IEEE754-2008 minNum() and maxNum() operations, returning the

numerical value if one of the operands is a quiet NaN.
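
The five explicit rounding directions listed above differ only in how they treat the fractional part. The sketch below models them for finite Python floats; the mode strings and the function name are hypothetical, and the ties-away case uses a simple floor(|x| + 0.5) formulation that is adequate for illustration but not for values at the limits of floating-point precision.

```python
import math


def round_to_integer(x, mode):
    """Round a finite float to an int using an explicit rounding direction."""
    if mode == "zero":
        return math.trunc(x)
    if mode == "plus_inf":
        return math.ceil(x)
    if mode == "minus_inf":
        return math.floor(x)
    if mode == "nearest_even":
        return round(x)  # Python 3 rounds ties to even
    if mode == "nearest_away":
        return int(math.copysign(math.floor(abs(x) + 0.5), x))
    raise ValueError(f"unknown rounding mode: {mode}")
```

The modes only disagree on non-integral inputs: for 2.5 they give 2, 3, 2, 2 and 3 respectively, and for -2.5 they give -2, -2, -3, -2 and -3.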

3.8 Advanced SIMD

See §5.7 below for a detailed description.

4 A64 ASSEMBLY LANGUAGE

4.1 Basic Structure

The letter W is shorthand for a 32-bit word, and X for a 64-bit extended word. The letter X (extended) is used rather

than D (double), since D conflicts with its use for floating point and SIMD “double-precision” registers and the T32

load/store “double-register” instructions (e.g. LDRD).

An A64 assembler will recognise both upper and lower-case variants of instruction mnemonics and register

names, but not mixed case. An A64 disassembler may output either upper or lower-case mnemonics and register

names. The case of program and data labels is significant.

The fundamental statement format and operand order follows that used by AArch32 UAL assemblers and

disassemblers, i.e. a single statement per source line, consisting of one or more optional program labels, followed

by an instruction mnemonic, then a destination register and one or more source operands separated by commas.

{label:*} {opcode {dest{, source1{, source2{, source3}}}}}

This dest/source ordering is reversed for store instructions, in common with AArch32 UAL.

The A64 assembly language does not require the ‘#’ symbol to introduce immediate values, though an assembler

must allow it. An A64 disassembler shall always output a ‘#’ before an immediate value for readability.

Where a user-defined symbol or label is identical to a pre-defined register name (e.g. “X0”) and is used in a context where its interpretation is ambiguous, for example in an operand position that would accept either a register name or an immediate expression, an assembler must interpret it as the register name. A symbol may be disambiguated by using it within an expression context, i.e. by placing it within parentheses and/or prefixing it with an explicit ‘#’ symbol.

In the examples below the sequence “//” is used as a comment leader, though A64 assemblers are also

expected to support their legacy ARM comment syntax.

4.2 Instruction Mnemonics

An A64 instruction form can be identified by the following combination of attributes:

• The operation name (e.g. ADD) which indicates the instruction semantics.

• The operand container, usually the register type. An instruction writes to the whole container, but if it is not

the largest in its class, then the remainder of the largest container in the class is set to ZERO.

• The operand data subtype, where some operand(s) are a different size from the primary container.

• The final source operand type, which may be a register or an immediate value.

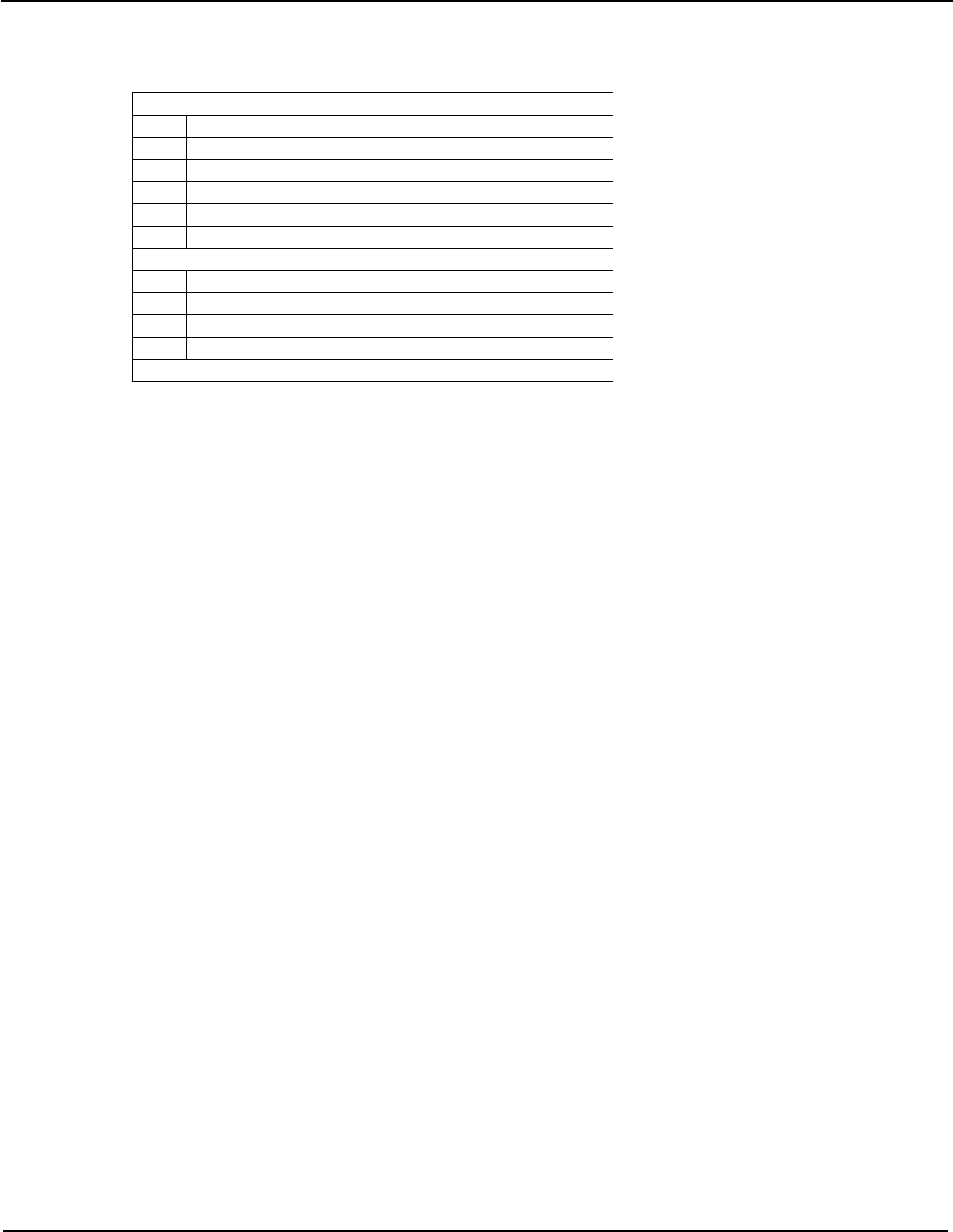

The container is one of:

Integer Class

W 32-bit integer

X 64-bit integer

SIMD Scalar & Floating Point Class

B 8-bit scalar

H 16-bit scalar & half-precision float

S 32-bit scalar & single-precision float

D 64-bit scalar & double-precision float

Q 128-bit scalar

The subtype is one of:

Load-Store / Sign-Zero Extend

B byte

SB signed byte

H halfword

SH signed halfword

W word

SW signed word

Register Width Changes

H High (dst gets top half)

N Narrow (dst < src)

L Long (dst > src)

W Wide (dst == src1, src1 > src2)

etc

These attributes are combined in the assembly language notation to identify the specific instruction form. In order

to retain a close look and feel to the existing ARM assembly language, the following format has been adopted:

<name>{<subtype>} <container>

In other words the operation name and subtype are described by the instruction mnemonic, and the container size

by the operand name(s). Where subtype is omitted, it is inherited from container.

In this way an assembler programmer can write an instruction without having to remember a multitude of new

mnemonics; and the reader of a disassembly listing can straightforwardly read an instruction and see at a glance

the type and size of each operand.

The implication of this is that the A64 assembly language overloads instruction mnemonics, and distinguishes

between the different forms of an instruction based on the operand register names. For example the ADD

instructions below all have different opcodes, but the programmer only has to remember one mnemonic and the

assembler automatically chooses the correct opcode based on the operands – with the disassembler doing the

reverse.

ADD W0, W1, W2 // add 32-bit register

ADD X0, X1, X2 // add 64-bit register

ADD X0, X1, W2, SXTW // add 64-bit extending register

ADD X0, X1, #42 // add 64-bit immediate
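
The overloading described above can be illustrated with a small model. The following Python sketch is not part of the architectural definition: the function name classify_add and the strings it returns are hypothetical, it covers only the four ADD examples shown, and it assumes the operands have already been split on commas.

```python
import re

def classify_add(operands):
    """Return a label for the ADD form implied by the operand spellings."""
    ops = [o.strip().upper() for o in operands]
    dest, last = ops[0], ops[2]
    # The destination name selects the container size (W = 32-bit, X or SP = 64-bit).
    width = 64 if dest.startswith(("X", "SP")) else 32
    # An optional '#' may introduce an immediate in the final source position.
    if re.fullmatch(r"#?-?\d+", last):
        return f"add {width}-bit immediate"
    # A trailing extend operator marks the extending-register form.
    if len(ops) > 3 and ops[3].startswith(("SXT", "UXT")):
        return f"add {width}-bit extending register"
    return f"add {width}-bit register"

print(classify_add(["X0", "X1", "W2", "SXTW"]))
```

A real assembler would also validate each operand against the chosen form; this sketch only shows that the register names, not the mnemonic, carry the size information.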

4.3 Condition Codes

In AArch32 assembly language conditionally executed instructions are represented by directly appending the

condition to the mnemonic, without a delimiter. This leads to some ambiguity which can make assembler code

difficult to parse: for example ADCS, BICS, LSLS and TEQ look at first glance like conditional instructions.

The A64 ISA has far fewer instructions which set or test condition codes. Those that do will be identified as

follows:

1. Instructions which set the condition flags are notionally different instructions, and will continue to be

identified by appending an ‘S’ to the base mnemonic, e.g. ADDS.

2. Instructions which are truly conditionally executed (i.e. when the condition is false they have no effect on

the architectural state, aside from advancing the program counter) have the condition appended to the

instruction with a '.' delimiter. For example B.EQ.

3. If there is more than one instruction extension, then the conditional extension is always last.

4. Where a conditional instruction has qualifiers, the qualifiers follow the condition.

5. Instructions which are unconditionally executed, but use the condition flags as a source operand, will

specify the condition to test in their final operand position, e.g. CSEL Wd,Wm,Wn,NE

To aid portability an A64 assembler may also provide the old UAL conditional mnemonics, so long as they have

direct equivalents in the A64 ISA. However, the UAL mnemonics will not be generated by an A64 disassembler –

their use is deprecated in 64-bit assembler code, and may cause a warning or error if backward compatibility is

not explicitly requested by the programmer.

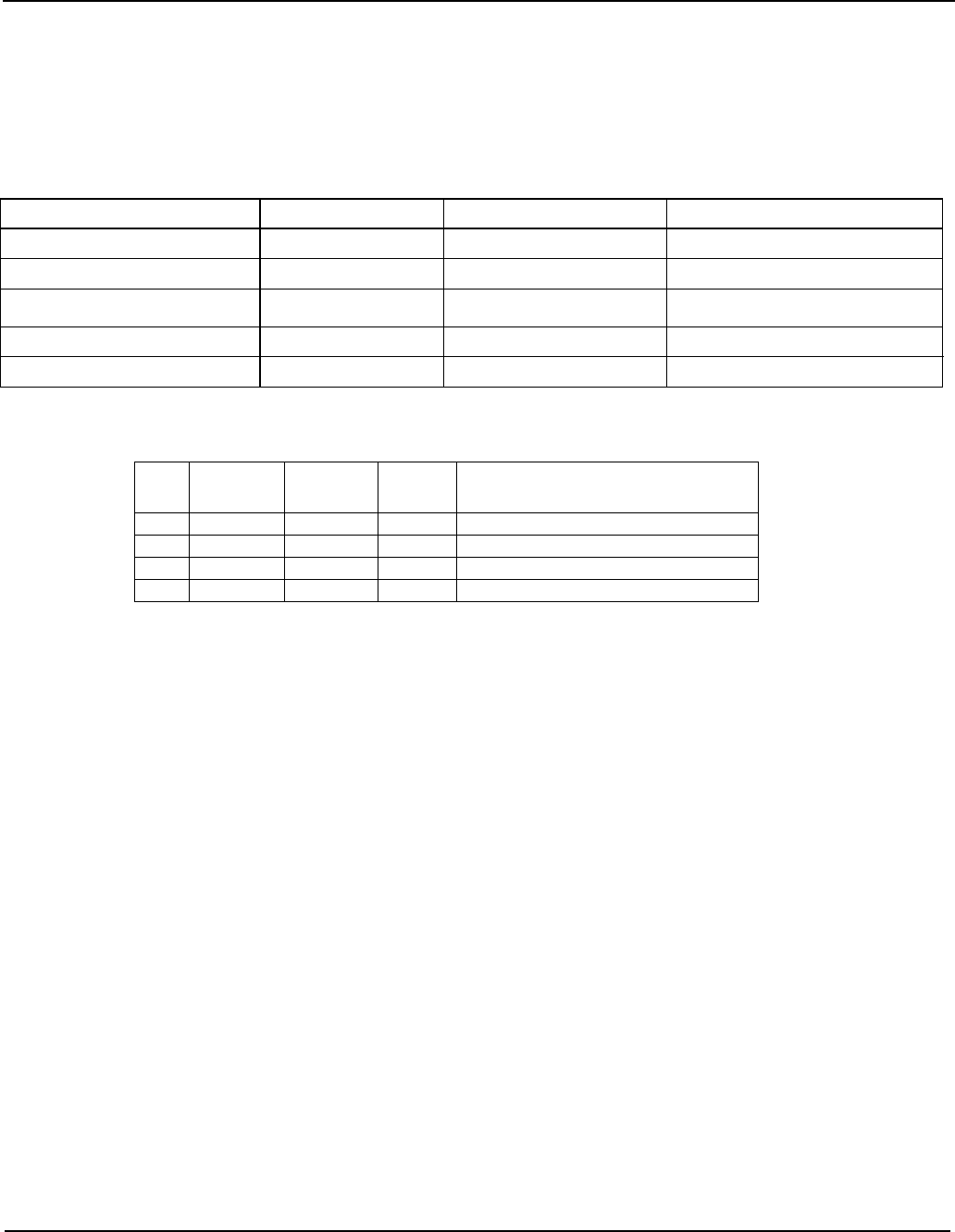

The full list of condition codes is as follows:

| Encoding | Name (& alias) | Meaning (integer) | Meaning (floating point) | Flags |
|---|---|---|---|---|
| 0000 | EQ | Equal | Equal | Z==1 |
| 0001 | NE | Not equal | Not equal, or unordered | Z==0 |
| 0010 | HS (CS) | Unsigned higher or same (Carry set) | Greater than, equal, or unordered | C==1 |
| 0011 | LO (CC) | Unsigned lower (Carry clear) | Less than | C==0 |
| 0100 | MI | Minus (negative) | Less than | N==1 |
| 0101 | PL | Plus (positive or zero) | Greater than, equal, or unordered | N==0 |
| 0110 | VS | Overflow set | Unordered | V==1 |
| 0111 | VC | Overflow clear | Ordered | V==0 |
| 1000 | HI | Unsigned higher | Greater than, or unordered | C==1 && Z==0 |
| 1001 | LS | Unsigned lower or same | Less than or equal | !(C==1 && Z==0) |
| 1010 | GE | Signed greater than or equal | Greater than or equal | N==V |
| 1011 | LT | Signed less than | Less than or unordered | N!=V |
| 1100 | GT | Signed greater than | Greater than | Z==0 && N==V |
| 1101 | LE | Signed less than or equal | Less than, equal, or unordered | !(Z==0 && N==V) |
| 1110 | AL | Always | Always | Any |
| 1111 | NV† | Always | Always | Any |

†The condition code NV exists only to provide a valid disassembly of the ‘1111b’ encoding, and otherwise behaves

identically to AL.
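
The flag tests in the table can be expressed compactly, because each odd encoding is the inverse of the even encoding before it (except for AL and NV, which are both always true). The Python sketch below is an illustrative model only; the names COND_NAMES, ALIASES and condition_holds are hypothetical, and the flags are passed as Booleans.

```python
COND_NAMES = ["EQ", "NE", "HS", "LO", "MI", "PL", "VS", "VC",
              "HI", "LS", "GE", "LT", "GT", "LE", "AL", "NV"]
ALIASES = {"CS": "HS", "CC": "LO"}

def condition_holds(cond, n, z, c, v):
    """Evaluate a condition name against the N, Z, C and V flags."""
    name = cond.upper()
    enc = COND_NAMES.index(ALIASES.get(name, name))
    base = enc >> 1                      # top three bits select the test
    if base == 0:
        result = z                       # EQ
    elif base == 1:
        result = c                       # HS / CS
    elif base == 2:
        result = n                       # MI
    elif base == 3:
        result = v                       # VS
    elif base == 4:
        result = c and not z             # HI
    elif base == 5:
        result = n == v                  # GE
    elif base == 6:
        result = (not z) and n == v      # GT
    else:
        return True                      # AL and NV are both "always"
    # The low bit of the encoding inverts the test.
    return (not result) if enc & 1 else result

print(condition_holds("LE", n=False, z=True, c=False, v=False))
```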

4.4 Register Names

4.4.1 General purpose (integer) registers

The thirty one general purpose registers in the main integer register bank are named R0 to R30, with special

register number 31 having different names, depending on the context in which it is used. However, when the

registers are used in a specific instruction form, they must be further qualified to indicate the operand data size (32

or 64 bits) – and hence the instruction’s data size.

The qualified names for the general purpose registers are as follows, where ‘n’ is the register number 0 to 30:

Size (bits) 32b 64b

Name Wn Xn

Where register number 31 represents read zero or discard result (aka the “zero register”):

Size (bits) 32b 64b

Name WZR XZR

Where register number 31 represents the stack pointer:

Size (bits) 32b 64b

Name WSP SP

In more detail:

• The names Xn and Wn refer to the same architectural register.

• There is no register named W31 or X31.

• For instruction operands where register 31 is interpreted as the 64-bit stack pointer, it is represented by

the name SP. For operands which do not interpret register 31 as the 64-bit stack pointer this name shall

cause an assembler error.

• The name WSP represents register 31 as the stack pointer in a 32-bit context. It is provided only to allow a

valid disassembly, and should not be seen in correctly behaving 64-bit code.

• For instruction operands which interpret register 31 as the zero register, it is represented by the name XZR

in 64-bit contexts, and WZR in 32-bit contexts. In operand positions which do not interpret register 31 as

the zero register these names shall cause an assembler error.

• Where a mnemonic is overloaded (i.e. can generate different instruction encodings depending on the data

size), then an assembler shall determine the precise form of the instruction from the size of the first

register operand. Usually the other operand registers should match the size of the first operand, but in

some cases a register may have a different size (e.g. an address base register is always 64 bits), and a

source register may be smaller than the destination if it contains a word, halfword or byte that is being

widened by the instruction to 64 bits.

• The architecture does not define a special name for register 30 that reflects its special role as the link

register on procedure calls. Such software names may be defined as part of the Procedure Calling

Standard.
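
The naming rules above can be summarised as a lookup. The following Python sketch is a hypothetical helper (parse_gpr and its return tuple are not defined by the architecture). It does not check whether a given operand position actually accepts the stack pointer or zero register form, and for simplicity it upper-cases its input, so it does not reject mixed-case names.

```python
import re

# Register 31 has context-dependent names; there is no W31 or X31.
SPECIAL = {
    "WZR": (31, 32, "zero"), "XZR": (31, 64, "zero"),
    "WSP": (31, 32, "sp"),   "SP":  (31, 64, "sp"),
}

def parse_gpr(name):
    """Return (register_number, width_bits, role) for a qualified name."""
    upper = name.upper()
    if upper in SPECIAL:
        return SPECIAL[upper]
    match = re.fullmatch(r"([WX])(0|[1-9]\d?)", upper)
    if not match or int(match.group(2)) > 30:
        raise ValueError(f"not a general purpose register name: {name}")
    width = 32 if match.group(1) == "W" else 64
    return int(match.group(2)), width, "gpr"

print(parse_gpr("X30"), parse_gpr("wzr"))
```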

4.4.2 FP/SIMD registers

The thirty two registers in the FP/SIMD register bank named V0 to V31 are used to hold floating point

operands for the scalar floating point instructions, and both scalar and vector operands for the Advanced

SIMD instructions. As with the general purpose integer registers, when they are used in a specific instruction

form the names must be further qualified to indicate the data shape (i.e. the data element size and number of

elements or lanes) held within them.

Note however that the data type, i.e. the interpretation of the bits within each register or vector element –

integer (signed, unsigned or irrelevant), floating point, polynomial or cryptographic hash – is not described by

the register name, but by the instruction mnemonics which operate on them. For more details see the

Advanced SIMD description in §5.7.

4.4.2.1 SIMD scalar register

In Advanced SIMD and floating point instructions which operate on scalar data the FP/SIMD registers behave

similarly to the main general-purpose integer registers, i.e. only the lower bits are accessed, with the unused

high bits ignored on a read and set to zero on a write. The qualified names for scalar FP/SIMD registers indicate

the number of significant bits as follows, where ‘n’ is a register number 0 to 31:

| Size (bits) | 8b | 16b | 32b | 64b | 128b |
|---|---|---|---|---|---|
| Name | Bn | Hn | Sn | Dn | Qn |

4.4.2.2 SIMD vector register

When a register holds multiple data elements on which arithmetic will be performed in a parallel, SIMD

fashion, then a qualifier describes the vector shape: i.e. the element size, and the number of elements or

“lanes”. Where “bits × lanes” does not equal 128, the upper 64 bits of the register are ignored when read and

set to zero on a write.

The fully qualified SIMD vector register names are as follows, where ‘n’ is the register number 0 to 31:

| Shape (bits × lanes) | 8b × 8 | 8b × 16 | 16b × 4 | 16b × 8 | 32b × 2 | 32b × 4 | 64b × 1 | 64b × 2 |
|---|---|---|---|---|---|---|---|---|
| Name | Vn.8B | Vn.16B | Vn.4H | Vn.8H | Vn.2S | Vn.4S | Vn.1D | Vn.2D |

4.4.2.3 SIMD vector element

Where a single element from a SIMD vector register is used as a scalar operand, this is indicated by

appending a constant, zero-based “element index” to the vector register name, inside square brackets. The

number of lanes is not represented, since it is not encoded, and may only be inferred from the index value.

Size (bits) 8b 16b 32b 64b

Name Vn.B[i] Vn.H[i] Vn.S[i] Vn.D[i]

However an assembler shall accept a fully qualified SIMD vector register name as in §4.4.2.2, so long as the

number of lanes is greater than the index value. For example the following forms will both be accepted by an

assembler as the name for the 32-bit element in bits <63:32> of SIMD register 9:

V9.S[1] standard disassembly

V9.2S[1] optional number of lanes

V9.4S[1] optional number of lanes

Note that the vector register element name Vn.S[0] is not equivalent to the scalar register name Sn.

Although they represent the same bits in the register, they select different instruction encoding forms, i.e.

vector element vs scalar form.
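
As an illustration of the element naming rules, the Python sketch below parses an element name, applies the "number of lanes greater than the index" check, and reports which register bits the element occupies. The function parse_element and its return format are hypothetical and exist only for this example.

```python
import re

ELEMENT_BITS = {"B": 8, "H": 16, "S": 32, "D": 64}

def parse_element(name):
    """Return (register, element_bits, index, (high_bit, low_bit))."""
    match = re.fullmatch(r"V(\d+)\.(\d*)([BHSD])\[(\d+)\]", name.upper())
    if not match:
        raise ValueError(f"not a vector element name: {name}")
    reg, lanes, index = int(match.group(1)), match.group(2), int(match.group(4))
    bits = ELEMENT_BITS[match.group(3)]
    if reg > 31 or index >= 128 // bits:
        raise ValueError(f"register or index out of range: {name}")
    if lanes:
        # An optional lane count must name a 64-bit or 128-bit shape
        # and must be greater than the index.
        if int(lanes) * bits not in (64, 128) or index >= int(lanes):
            raise ValueError(f"lane count does not fit index: {name}")
    low = index * bits
    return reg, bits, index, (low + bits - 1, low)

print(parse_element("V9.S[1]"))
```

All three spellings from the example above (V9.S[1], V9.2S[1] and V9.4S[1]) resolve to the same element in this model, whereas V9.2S[2] is rejected.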

4.4.2.4 SIMD vector register list

Where an instruction operates on a “list” of vector registers – for example vector load-store and table lookup –

the registers are specified as a list within curly braces. This list consists of either a sequence of registers

separated by commas, or a register range separated by a hyphen. The registers must be numbered in

increasing order (modulo 32), in increments of one or two. The hyphenated form is preferred for disassembly

if there are more than two registers in the list, and the register numbers are monotonically increasing in

increments of one. The following are equivalent representations of a set of four registers V4 to V7, each

holding four lanes of 32-bit elements:

{V4.4S - V7.4S} standard disassembly

{V4.4S, V5.4S, V6.4S, V7.4S} alternative representation
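
The equivalence of the two list notations can be sketched as follows. This Python helper (expand_register_list, a hypothetical name) expands the hyphenated range form into the comma-separated form, wrapping modulo 32. It assumes an ASCII hyphen as the range separator and performs only minimal validation.

```python
import re

def expand_register_list(text):
    """Expand '{Va.T - Vb.T}' or '{Va.T, Vb.T, ...}' into a list of names."""
    inner = text.strip()[1:-1]                      # drop the curly braces
    if "-" not in inner:
        return [part.strip().upper() for part in inner.split(",")]
    first, last = (part.strip().upper() for part in inner.split("-"))
    m1 = re.fullmatch(r"V(\d+)\.(\w+)", first)
    m2 = re.fullmatch(r"V(\d+)\.(\w+)", last)
    if not m1 or not m2 or m1.group(2) != m2.group(2):
        raise ValueError(f"malformed register range: {text}")
    start, end = int(m1.group(1)), int(m2.group(1))
    count = (end - start) % 32 + 1                  # register numbers wrap modulo 32
    return [f"V{(start + i) % 32}.{m1.group(2)}" for i in range(count)]

print(expand_register_list("{V4.4S - V7.4S}"))
```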

4.4.2.5 SIMD vector element list

It is also possible for registers in a list to have a vector element form, for example LD4 loading one element

into each of four registers, in which case the index is appended to the list, as follows:

{V4.S - V7.S}[3] standard disassembly

{V4.4S, V5.4S, V6.4S, V7.4S}[3] alternative with optional number of lanes

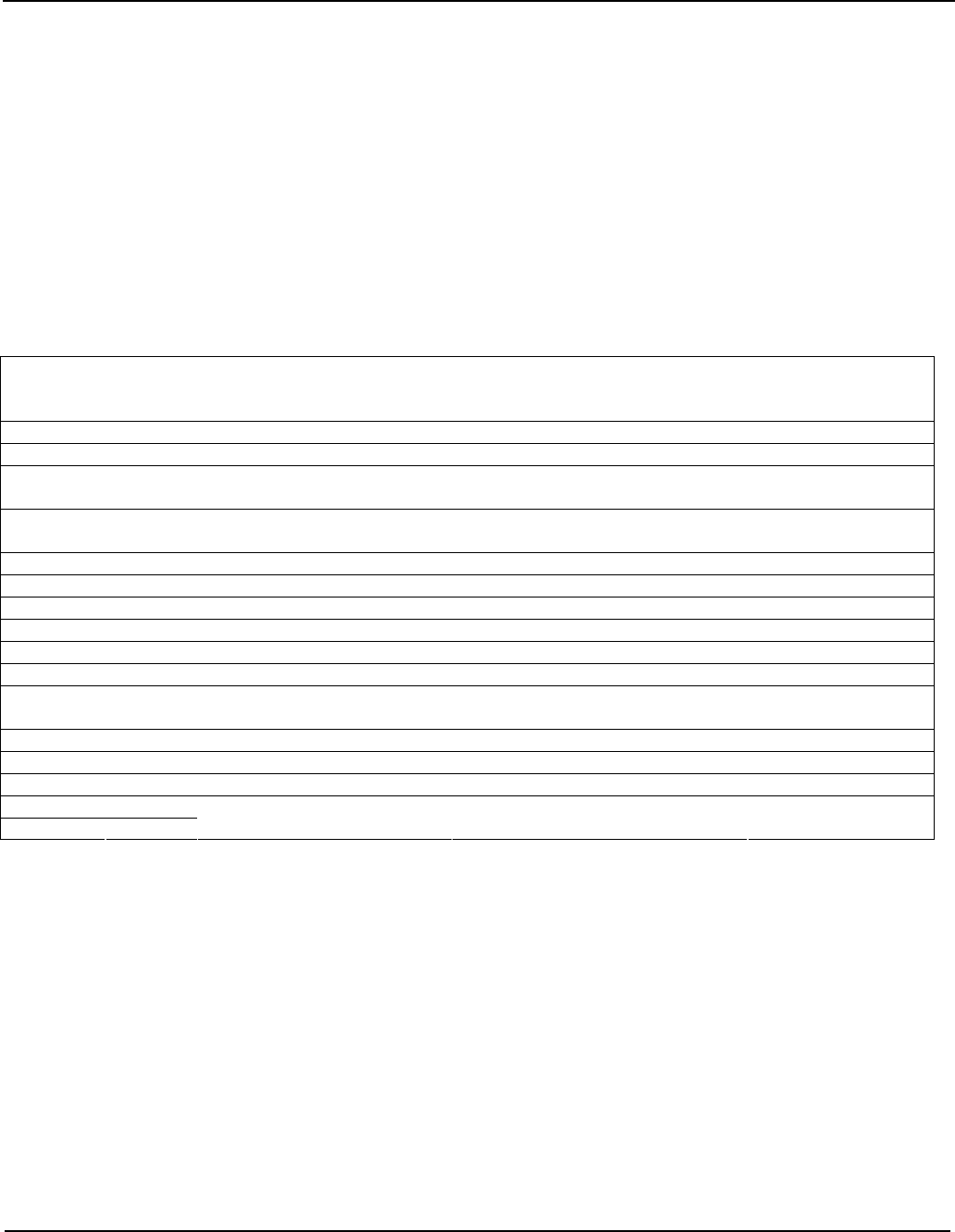

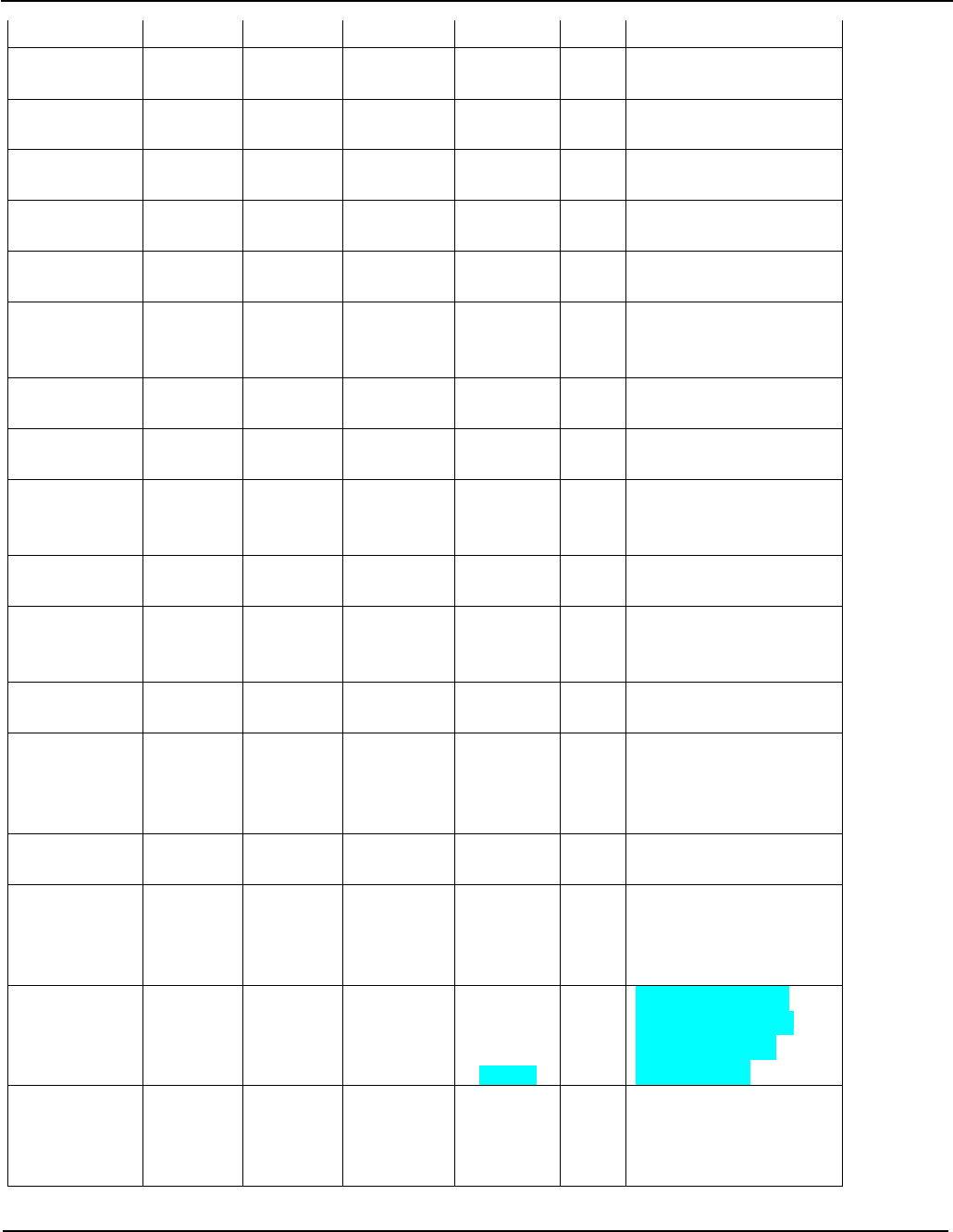

4.5 Load/Store Addressing Modes

Load/store addressing modes in the A64 instruction set broadly follow T32, consisting of a 64-bit base register

(Xn or SP) plus an immediate or register offset.

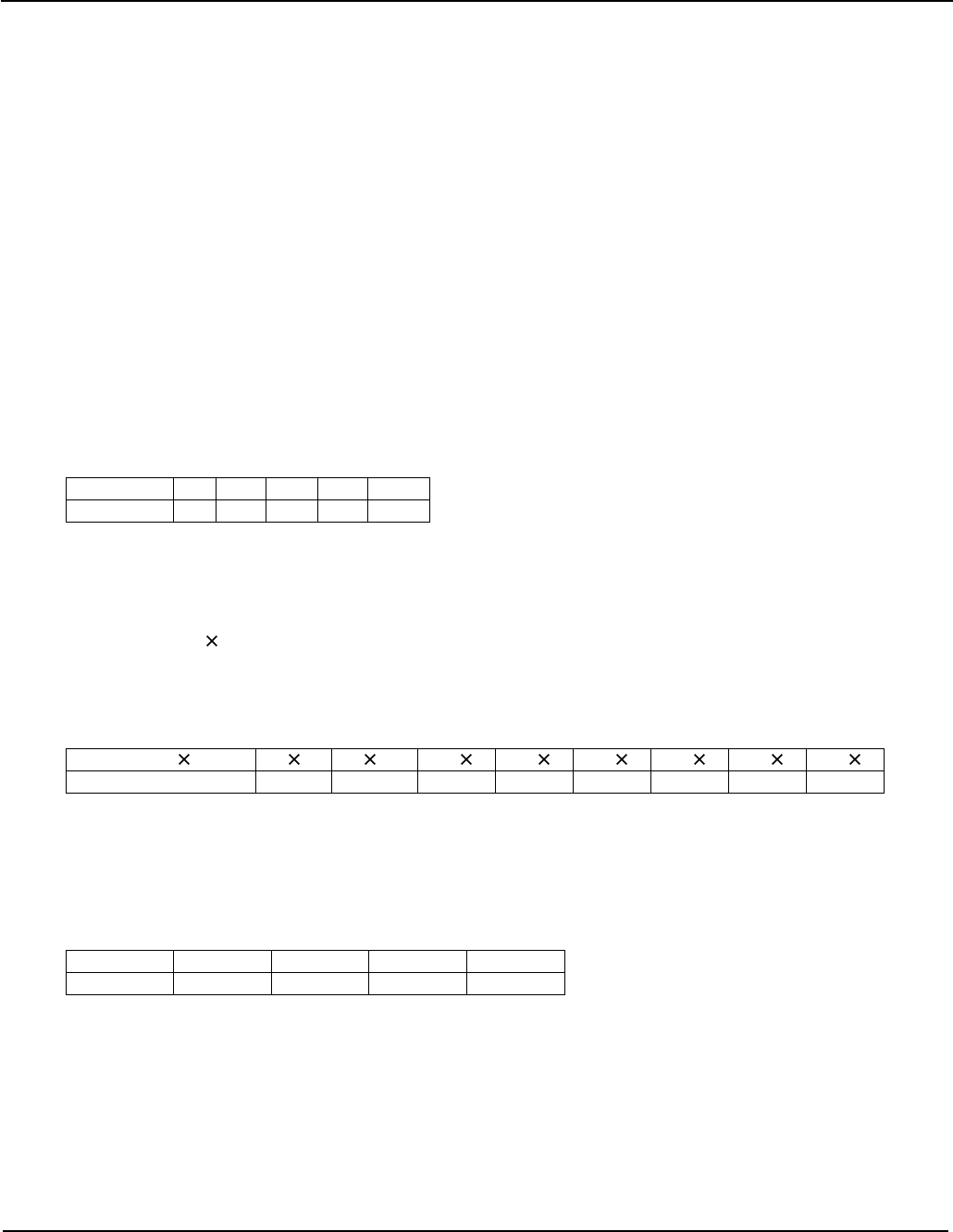

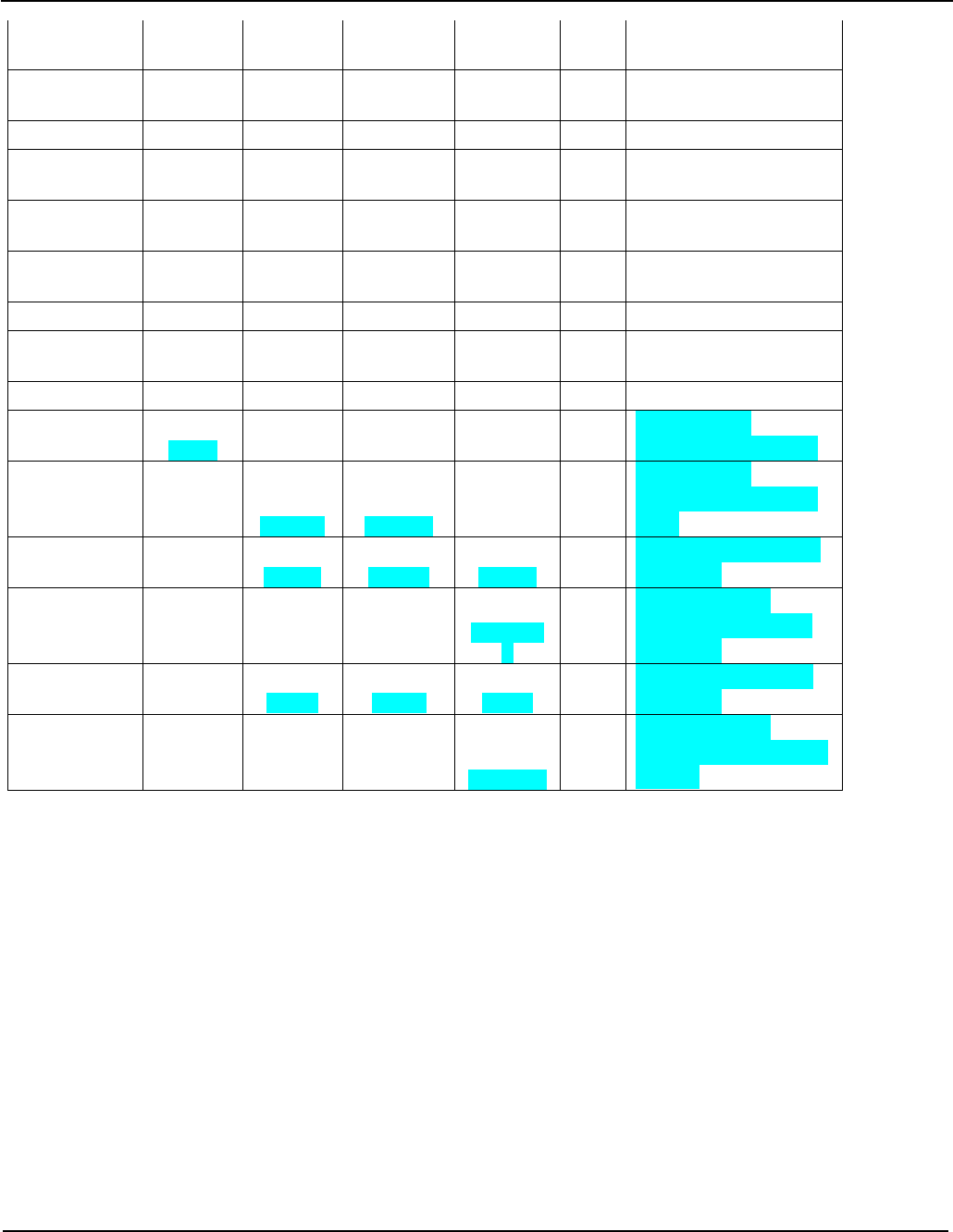

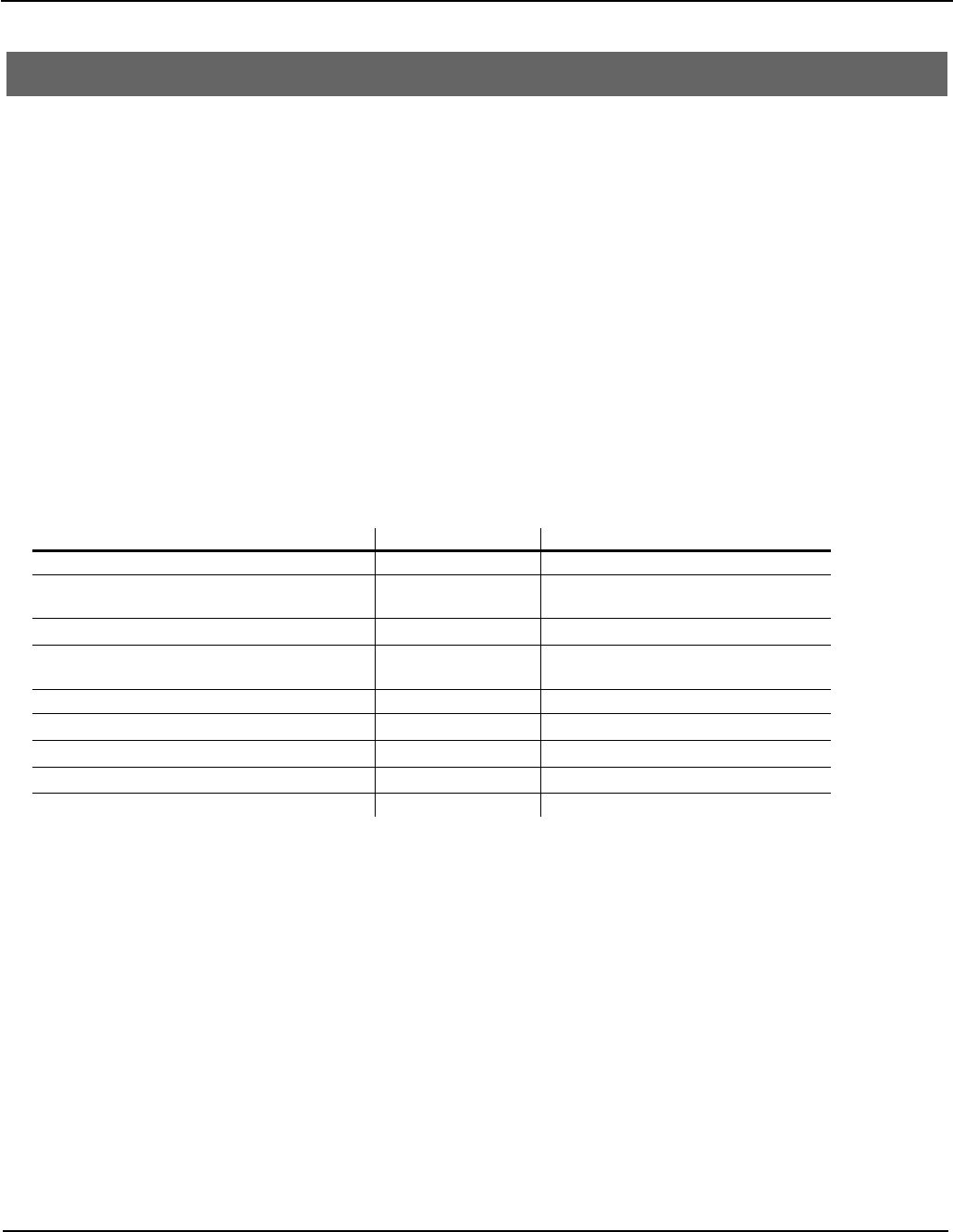

| Type | Immediate Offset | Register Offset | Extended Register Offset |
|---|---|---|---|
| Simple register (exclusive) | [base{,#0}] | n/a | n/a |
| Offset | [base{,#imm}] | [base,Xm{,LSL #imm}] | [base,Wm,(S\|U)XTW {#imm}] |
| Pre-indexed | [base,#imm]! | n/a | n/a |
| Post-indexed | [base],#imm | n/a | n/a |
| PC-relative (literal) load | label | n/a | n/a |

• An immediate offset is encoded in various ways, depending on the type of load/store instruction:

| Bits | Sign | Scaling | Write-back? | Load/Store Type |
|---|---|---|---|---|
| 0 | - | - | - | exclusive / acquire / release |
| 7 | signed | scaled | option | register pair |
| 9 | signed | unscaled | option | single register |
| 12 | unsigned | scaled | no | single register |

• Where an immediate offset is scaled, it is encoded as a multiple of the data access size (except PC-

relative loads, where it is always a word multiple). The assembler always accepts a byte offset, which is

converted to the scaled offset for encoding, and a disassembler decodes the scaled offset encoding and

displays it as a byte offset. The range of byte offsets supported therefore varies according to the type of

load/store instruction and the data access size.

• The "post-indexed" forms mean that the memory address is the base register value, then base plus

offset is written back to the base register.

• The "pre-indexed" forms mean that the memory address is the base register value plus offset, then the

computed address is written back to the base register.

• A “register offset” means that the memory address is the base register value plus the value of 64-bit index

register Xm optionally scaled by the access size (in bytes), i.e. shifted left by log2(size).

• An “extended register offset” means that the memory address is the base register value plus the value of

32-bit index register Wm, sign or zero extended to 64 bits, then optionally scaled by the access size.

• An assembler should accept Xm as an extended index register, though Wm is preferred.

• The pre/post-indexed forms are not available with a register offset.

• There is no "down" option, so subtraction from the base register requires a negative signed immediate

offset (two's complement) or a negative value in the index register.

• When the base register is SP the stack pointer is required to be quadword (16 byte, 128 bit) aligned prior

to the address calculation and write-backs – misalignment will cause a stack alignment fault. The stack

pointer may not be used as an index register.

• Use of the program counter (PC) as a base register is implicit in literal load instructions and not permitted

in other load or store instructions. Literal loads do not include byte and halfword forms. See section 5

below for the definition of label.
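
The address calculations described in the list above can be modelled in a few lines. The Python sketch below is illustrative only: the function names immediate_access and register_offset_address are hypothetical, addresses are treated as 64-bit unsigned integers, and alignment checks and faults are not modelled.

```python
MASK64 = (1 << 64) - 1

def immediate_access(base, offset, mode):
    """Return (address_used, new_base_value) for an immediate-offset access."""
    target = (base + offset) & MASK64
    if mode == "offset":
        return target, base          # no write-back
    if mode == "pre":
        return target, target        # access base+offset, then write it back
    if mode == "post":
        return base, target          # access base, then write back base+offset
    raise ValueError(f"unknown mode: {mode}")

def register_offset_address(base, index, access_bytes, scaled=False, extend=None):
    """Return the address for a register or extended register offset."""
    if extend in ("UXTW", "SXTW"):
        index &= 0xFFFFFFFF                        # use only Wm
        if extend == "SXTW" and index & 0x80000000:
            index -= 1 << 32                       # sign-extend to 64 bits
    # Optional scaling shifts the index left by log2(access size in bytes).
    shift = access_bytes.bit_length() - 1 if scaled else 0
    return (base + (index << shift)) & MASK64

print(immediate_access(0x1000, 16, "pre"), immediate_access(0x1000, 16, "post"))
```

In this model a Wm value of 0xFFFFFFFF with SXTW and doubleword scaling addresses base-8, while the same value with UXTW addresses a location far above the base.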

5 A64 INSTRUCTION SET

The following syntax terms are used frequently throughout the A64 instruction set description. See also the

syntax notation described in section 2 above.

Xn Unless otherwise indicated a general register operand Xn or Wn interprets register 31 as the zero

register, represented by the names XZR or WZR respectively.

Xn|SP A general register operand of the form Xn|SP or Wn|WSP interprets register 31 as the stack pointer,

represented by the names SP or WSP respectively.

cond A standard ARM condition EQ, NE, CS|HS, CC|LO, MI, PL, VS, VC, HI, LS, GE, LT, GT,

LE, AL or NV with the same meanings as in AArch32. Note that although AL and NV represent different

encodings, as in AArch32 they are both interpreted as the “always true” condition. Unless stated otherwise,

AArch64 instructions do not set or use the condition flags; those that do set the flags set all of them.

If used in a pseudo-code expression this symbol represents a Boolean whose value is the truth of the

specified condition test.

invert(cond)

The inverse of cond, for example the inverse of GT is LE.

uimmn An n-bit unsigned (positive) immediate value.

simmn An n-bit two's complement signed immediate value (where n includes the sign bit).

label Represents a pc-relative reference from an instruction to a target code or data location. The precise

syntax is likely to be specific to individual toolchains, but the preferred form is “pcsym” or “pcsym±offs”,

where pcsym is:

a. The preferred architectural notation which is (at the choice of the disassembler) the character ‘.’

or string “{pc}” representing the referencing instruction’s address or offset.

b. For a programmers’ view where the instruction’s address in memory or offset within a

relocatable image is known and a list of symbols is available, then the symbol name whose

value is nearest to, and preferably less than or equal to the target location’s address or offset.

c. For a programmers’ view where the instruction’s address or offset is known but a list of symbols

is not available, then the target address or offset as a hexadecimal constant.

And where in all cases “±offs” gives the byte offset from pcsym to the target location’s address or

offset, which may be omitted if the offset is zero.

addr Represents an addressing mode that is some subset (documented for each class of instruction) of the

addressing modes in section 4.5 above.

lshift Represents an optional shift operator performed on the final source operand of a logical instruction,

chosen from LSL, LSR, ASR, or ROR, followed by a constant shift amount #imm in the range 0 to

regwidth-1. If omitted the default is “LSL #0”.

ashift Represents an optional shift operator to be performed on the final source operand of an arithmetic

instruction chosen from LSL, LSR, or ASR, followed by a constant shift amount #imm in the range 0 to

regwidth-1. If omitted the default is “LSL #0”.

5.1 Control Flow

5.1.1 Conditional Branch

Unless stated otherwise, conditional branches have a branch offset range of ±1MiB from the program counter.

B.cond label

Branch: conditionally jumps to program-relative label if cond is true.

CBNZ Wn, label

Compare and Branch Not Zero: conditionally jumps to program-relative label if Wn is not equal to zero.

CBNZ Xn, label

Compare and Branch Not Zero (extended): conditionally jumps to label if Xn is not equal to zero.

CBZ Wn, label

Compare and Branch Zero: conditionally jumps to label if Wn is equal to zero.

CBZ Xn, label

Compare and Branch Zero (extended): conditionally jumps to label if Xn is equal to zero.

TBNZ Xn|Wn, #uimm6, label

Test and Branch Not Zero: conditionally jumps to label if bit number uimm6 in register Xn is not zero.

The bit number implies the width of the register, which may be written and should be disassembled as Wn

if uimm6 is less than 32. Limited to a branch offset range of ±32KiB.

TBZ Xn|Wn, #uimm6, label

Test and Branch Zero: conditionally jumps to label if bit number uimm6 in register Xn is zero. The bit

number implies the width of the register, which may be written and should be disassembled as Wn if

uimm6 is less than 32. Limited to a branch offset range of ±32KiB.

5.1.2 Unconditional Branch (immediate)

Unconditional branches support an immediate branch offset range of ±128MiB.

B label

Branch: unconditionally jumps to pc-relative label.

BL label

Branch and Link: unconditionally jumps to pc-relative label, writing the address of the next sequential

instruction to register X30.
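
The quoted branch ranges follow from the width of the signed word offset held in each encoding. The Python sketch below derives them, assuming the immediate field widths used by the A64 encodings (19 bits for B.cond, CBZ and CBNZ, 14 bits for TBZ and TBNZ, 26 bits for B and BL); these widths are not stated in this section, and the helper name branch_range is hypothetical.

```python
def branch_range(imm_bits):
    """Byte offsets (lowest, highest) reachable by a signed word offset."""
    lowest = -(1 << (imm_bits - 1)) * 4       # most negative word offset, in bytes
    highest = ((1 << (imm_bits - 1)) - 1) * 4  # most positive word offset, in bytes
    return lowest, highest

MIB, KIB = 1 << 20, 1 << 10
for name, bits in (("B.cond/CBZ/CBNZ", 19), ("TBZ/TBNZ", 14), ("B/BL", 26)):
    print(name, branch_range(bits))
```

The positive limit is one instruction (4 bytes) short of the nominal figure, so "±1MiB" corresponds to byte offsets from -1MiB to +1MiB-4.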

5.1.3 Unconditional Branch (register)

BLR Xm

Branch and Link Register: unconditionally jumps to address in Xm, writing the address of the next

sequential instruction to register X30.

BR Xm

Branch Register: jumps to address in Xm, with a hint to the CPU that this is not a subroutine return.

RET {Xm}

Return: jumps to register Xm, with a hint to the CPU that this is a subroutine return. An assembler shall

default to register X30 if Xm is omitted.

5.2 Memory Access

Aside from exclusive and explicitly ordered loads and stores, addresses may have arbitrary alignment unless strict

alignment checking is enabled (SCTLR.A==1). However if SP is used as the base register then the value of the

stack pointer prior to adding any offset must be quadword (16 byte) aligned, or else a stack alignment exception

will be generated.

A memory read or write generated by the load or store of a single general-purpose register aligned to the size of

the transfer is atomic. Memory reads or writes generated by the non-exclusive load or store of a pair of general-

purpose registers aligned to the size of the register are treated as two atomic accesses, one for each register. In

all other cases, unless otherwise stated, there are no atomicity guarantees.

5.2.1 Load-Store Single Register

The most general forms of load-store support a variety of addressing modes, consisting of base register Xn or SP,

plus one of:

• Scaled, 12-bit, unsigned immediate offset, without pre- and post-index options.

• Unscaled, 9-bit, signed immediate offset with pre- or post-index writeback.

• Scaled or unscaled 64-bit register offset.

• Scaled or unscaled 32-bit extended register offset.

If a Load instruction specifies writeback and the register being loaded is also the base register, then one of the

following behaviours can occur:

• The instruction is UNALLOCATED

• The instruction is treated as a NOP

• The instruction performs the load using the specified addressing mode and the base register becomes

UNKNOWN. In addition, if an exception occurs during such an instruction, the base address might be

corrupted such that the instruction cannot be repeated.

If a Store instruction performs a writeback and the register being stored is also the base register, then one of the

following behaviours can occur:

• The instruction is UNALLOCATED

• The instruction is treated as a NOP

• The instruction performs the store of the specified register using the specified addressing mode, but the

value stored is UNKNOWN

LDR Wt, addr

Load Register: loads a word from memory addressed by addr to Wt.

LDR Xt, addr

Load Register (extended): loads a doubleword from memory addressed by addr to Xt.

LDRB Wt, addr

Load Byte: loads a byte from memory addressed by addr, then zero-extends it to Wt.

LDRSB Wt, addr

Load Signed Byte: loads a byte from memory addressed by addr, then sign-extends it into Wt.

LDRSB Xt, addr

Load Signed Byte (extended): loads a byte from memory addressed by addr, then sign-extends it into Xt.

LDRH Wt, addr

Load Halfword: loads a halfword from memory addressed by addr, then zero-extends it into Wt.

LDRSH Wt, addr

Load Signed Halfword: loads a halfword from memory addressed by addr, then sign-extends it into Wt.

LDRSH Xt, addr

Load Signed Halfword (extended): loads a halfword from memory addressed by addr, then sign-extends

it into Xt.

LDRSW Xt, addr

Load Signed Word (extended): loads a word from memory addressed by addr, then sign-extends it into

Xt.

STR Wt, addr

Store Register: stores word from Wt to memory addressed by addr.

STR Xt, addr

Store Register (extended): stores doubleword from Xt to memory addressed by addr.

STRB Wt, addr

Store Byte: stores byte from Wt to memory addressed by addr.

STRH Wt, addr

Store Halfword: stores halfword from Wt to memory addressed by addr.
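
The difference between the zero-extending and sign-extending loads can be seen by modelling the value that ends up in the destination register. The Python sketch below is illustrative only; load_value is a hypothetical helper, and a little-endian data layout is assumed.

```python
def load_value(raw_bytes, signed, dest_bits):
    """Value held in a dest_bits-wide register after loading raw_bytes.

    signed=False models LDRB/LDRH/LDR (zero-extension);
    signed=True models LDRSB/LDRSH/LDRSW (sign-extension).
    """
    value = int.from_bytes(raw_bytes, "little", signed=signed)
    return value & ((1 << dest_bits) - 1)   # keep only the destination width

byte = b"\x80"
print(hex(load_value(byte, False, 32)))   # LDRB  Wt
print(hex(load_value(byte, True, 32)))    # LDRSB Wt
print(hex(load_value(byte, True, 64)))    # LDRSB Xt
```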

5.2.2 Load-Store Single Register (unscaled offset)

The load-store single register (unscaled offset) instructions support an addressing mode of base register Xn or

SP, plus:

• Unscaled, 9-bit, signed immediate offset, without pre- and post-index options

These instructions use unique mnemonics to distinguish them from normal load-store instructions due to the

overlap of functionality with the scaled 12-bit unsigned immediate offset addressing mode when the offset is

positive and naturally aligned.

A programmer-friendly assembler could generate these instructions in response to the standard LDR/STR

mnemonics when the immediate offset is unambiguous, i.e. when it is negative or unaligned. Similarly a

disassembler could display these instructions using the standard LDR/STR mnemonics when the encoded

immediate is negative or unaligned. However this behaviour is not required by the architectural assembly

language.
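
The overlap between the two immediate forms can be made concrete. The Python sketch below lists which encodings can represent a given byte offset, using the field descriptions from this document (scaled 12-bit unsigned and unscaled 9-bit signed). The function name usable_forms and the strings it returns are hypothetical.

```python
def usable_forms(offset, access_bytes):
    """List the immediate forms able to encode a byte offset (no write-back)."""
    forms = []
    # Scaled form: non-negative multiple of the access size, 12-bit after scaling.
    if offset >= 0 and offset % access_bytes == 0 and offset // access_bytes <= 4095:
        forms.append("scaled uimm12")
    # Unscaled form: any byte offset that fits in 9 signed bits.
    if -256 <= offset <= 255:
        forms.append("unscaled simm9")
    return forms

for off in (8, -8, 3, 32760):
    print(off, usable_forms(off, 8))
```

Offsets that are negative or unaligned have only the unscaled encoding, which is the unambiguous case mentioned above; small positive aligned offsets can be encoded either way.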

LDUR Wt, [base,#simm9]

Load (Unscaled) Register: loads a word from memory addressed by base+simm9 to Wt.

LDUR Xt, [base,#simm9]

Load (Unscaled) Register (extended): loads a doubleword from memory addressed by base+simm9 to

Xt.

LDURB Wt, [base,#simm9]

Load (Unscaled) Byte: loads a byte from memory addressed by base+simm9, then zero-extends it into

Wt.

LDURSB Wt, [base,#simm9]

Load (Unscaled) Signed Byte: loads a byte from memory addressed by base+simm9, then sign-extends it

into Wt.

LDURSB Xt, [base,#simm9]

Load (Unscaled) Signed Byte (extended): loads a byte from memory addressed by base+simm9, then

sign-extends it into Xt.

LDURH Wt, [base,#simm9]

Load (Unscaled) Halfword: loads a halfword from memory addressed by base+simm9, then zero-extends

it into Wt.

LDURSH Wt, [base,#simm9]

Load (Unscaled) Signed Halfword: loads a halfword from memory addressed by base+simm9, then sign-

extends it into Wt.

LDURSH Xt, [base,#simm9]

Load (Unscaled) Signed Halfword (extended): loads a halfword from memory addressed by base+simm9,

then sign-extends it into Xt.

LDURSW Xt, [base,#simm9]

Load (Unscaled) Signed Word (extended): loads a word from memory addressed by base+simm9, then

sign-extends it into Xt.

STUR Wt, [base,#simm9]

Store (Unscaled) Register: stores word from Wt to memory addressed by base+simm9.

STUR Xt, [base,#simm9]

Store (Unscaled) Register (extended): stores doubleword from Xt to memory addressed by base+simm9.

STURB Wt, [base,#simm9]

Store (Unscaled) Byte: stores byte from Wt to memory addressed by base+simm9.

STURH Wt, [base,#simm9]

Store (Unscaled) Halfword: stores halfword from Wt to memory addressed by base+simm9.

5.2.3 Load Single Register (pc-relative, literal load)

The pc-relative address from which to load is encoded as a 19-bit signed word offset which is shifted left by 2 and

added to the program counter, giving access to any word-aligned location within ±1MiB of the PC.

As a convenience assemblers will typically permit the notation “=value” in conjunction with the pc-relative literal

load instructions to automatically place an immediate value or symbolic address in a nearby literal pool and

generate a hidden label which references it. But that syntax is not architectural and will never appear in a

disassembly. A64 has other instructions to construct immediate values (section 5.3.3) and addresses (section

5.3.4) in a register which may be preferable to loading them from a literal pool.
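
The literal address calculation can be sketched as a pair of helper functions. This Python model is illustrative only: encode_imm19 and decode_imm19 are hypothetical names, and the sketch deals only with the 19-bit offset field described above, not with the rest of the instruction encoding.

```python
def encode_imm19(pc, target):
    """Return the 19-bit field for a literal at 'target' referenced from 'pc'."""
    delta = target - pc
    if delta % 4:
        raise ValueError("literal must be a whole number of words from the PC")
    words = delta // 4
    if not -(1 << 18) <= words < (1 << 18):
        raise ValueError("literal is outside the reachable range")
    return words & 0x7FFFF                 # two's complement in 19 bits

def decode_imm19(pc, field):
    """Recover the literal address from the PC and the 19-bit field."""
    words = field - (1 << 19) if field & (1 << 18) else field   # sign-extend
    return pc + (words << 2)               # shift left by 2, add to the PC

field = encode_imm19(0x1000, 0x0FF8)
print(hex(field), hex(decode_imm19(0x1000, field)))
```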

LDR Wt, label | =value

Load Literal Register (32-bit): loads a word from memory addressed by label to Wt.

LDR Xt, label | =value

Load Literal Register (64-bit): loads a doubleword from memory addressed by label to Xt.

LDRSW Xt, label | =value

Load Literal Signed Word (extended): loads a word from memory addressed by label, then sign-extends

it into Xt.

5.2.4 Load-Store Pair

The load-store pair instructions support an addressing mode consisting of base register Xn or SP, plus:

• Scaled 7-bit signed immediate offset, with pre- and post-index writeback options

If a Load Pair instruction specifies the same register for the two registers that are being loaded, then one of the

following behaviours can occur:

• The instruction is UNALLOCATED

• The instruction is treated as a NOP

• The instruction performs all of the loads using the specified addressing mode and the register being

loaded takes an UNKNOWN value

If a Load Pair instruction specifies writeback and one of the registers being loaded is also the base register, then

one of the following behaviours can occur:

• The instruction is UNALLOCATED

• The instruction is treated as a NOP

• The instruction performs all of the loads using the specified addressing mode and the base register

becomes UNKNOWN. In addition, if an exception occurs during such an instruction, the base address might

be corrupted such that the instruction cannot be repeated.

If a Store Pair instruction performs a writeback and one of the registers being stored is also the base register, then

one of the following behaviours can occur:

• The instruction is UNALLOCATED

• The instruction is treated as a NOP

• The instruction performs all of the stores of the registers specified using the specified addressing mode

but the value stored for the base register is UNKNOWN

LDP Wt1, Wt2, addr

Load Pair Registers: loads two words from memory addressed by addr to Wt1 and Wt2.

LDP Xt1, Xt2, addr

Load Pair Registers (extended): loads two doublewords from memory addressed by addr to Xt1 and

Xt2.

LDPSW Xt1, Xt2, addr

Load Pair Signed Words (extended): loads two words from memory addressed by addr, then sign-extends

them into Xt1 and Xt2.

STP Wt1, Wt2, addr

Store Pair Registers: stores two words from Wt1 and Wt2 to memory addressed by addr.

STP Xt1, Xt2, addr

Store Pair Registers (extended): stores two doublewords from Xt1 and Xt2 to memory addressed by

addr.
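
The byte offsets reachable by the load-store pair instructions follow from the scaled 7-bit signed immediate. The Python sketch below works them out, assuming the offset is scaled by the size of one register in the pair (4 bytes for Wt, 8 bytes for Xt). The helper names pair_offset_range and encode_pair_offset are hypothetical.

```python
def pair_offset_range(reg_bytes):
    """Byte offsets (lowest, highest) encodable for one register size."""
    return -64 * reg_bytes, 63 * reg_bytes

def encode_pair_offset(byte_offset, reg_bytes):
    """Return the 7-bit field for a byte offset, as an assembler might."""
    if byte_offset % reg_bytes:
        raise ValueError("offset must be a multiple of the register size")
    scaled = byte_offset // reg_bytes
    if not -64 <= scaled <= 63:
        raise ValueError("offset is outside the 7-bit signed range")
    return scaled & 0x7F                   # two's complement in 7 bits

print(pair_offset_range(4), pair_offset_range(8))
print(hex(encode_pair_offset(-16, 8)))
```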

5.2.5 Load-Store Non-temporal Pair

The LDNP and STNP non-temporal pair instructions provide a hint to the memory system that an access is “non-

temporal” or “streaming” and unlikely to be accessed again in the near future so need not be retained in data

caches. However depending on the memory type they may permit memory reads to be preloaded and memory

writes to be gathered, in order to accelerate bulk memory transfers.

Furthermore, as a special exception to the normal memory ordering rules, where an address dependency exists

between two memory reads and the second read was generated by a Load Non-temporal Pair instruction then, in

the absence of any other barrier mechanism to achieve order, those memory accesses can be observed in any

order by other observers within the shareability domain of the memory addresses being accessed.

The LDNP and STNP instructions support an addressing mode of base register Xn or SP, plus:

• Scaled 7-bit signed immediate offset, without pre- and post-index options

If a Load Non-temporal Pair instruction specifies the same register for the two registers that are being loaded, then

one of the following behaviours can occur:

• The instruction is UNALLOCATED

• The instruction is treated as a NOP

• The instruction performs all of the loads using the specified addressing mode and the register being loaded takes an UNKNOWN value
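The two operand constraints above (a scaled 7-bit signed offset, and distinct destination registers for a load) can be sketched as a small C check. The function name and parameters are hypothetical. The sketch assumes that "scaled" means the byte offset is a multiple of the transfer size (4 bytes for the Wt form, 8 bytes for the Xt form) and that the 7-bit signed field therefore holds a value from -64 to 63.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>

/* Hypothetical helper: checks the operands of a Load Non-temporal Pair.
 * size_bytes is 4 for the Wt form and 8 for the Xt form (an assumption
 * about how the scaled offset is derived). */
bool ldnp_operands_ok(unsigned t1, unsigned t2,
                      int32_t offset, unsigned size_bytes)
{
    assert(size_bytes == 4 || size_bytes == 8);

    /* Same register named twice: behaviour is not well defined. */
    if (t1 == t2)
        return false;

    /* The byte offset must be a multiple of the transfer size. */
    if (offset % (int32_t)size_bytes != 0)
        return false;

    /* The scaled value must fit in a 7-bit signed field. */
    int32_t scaled = offset / (int32_t)size_bytes;
    return scaled >= -64 && scaled <= 63;
}
```

Under these assumptions the Wt form reaches byte offsets from -256 to 252 and the Xt form from -512 to 504.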

LDNP Wt1, Wt2, [base,#imm]

Load Non-temporal Pair: loads two words from memory addressed by base+imm to Wt1 and Wt2, with a

non-temporal hint.

LDNP Xt1, Xt2, [base,#imm]

Load Non-temporal Pair (extended): loads two doublewords from memory addressed by base+imm to

Xt1 and Xt2, with a non-temporal hint.

STNP Wt1, Wt2, [base,#imm]

Store Non-temporal Pair: stores two words from Wt1 and Wt2 to memory addressed by base+imm, with a

non-temporal hint.

STNP Xt1, Xt2, [base,#imm]

Store Non-temporal Pair (extended): stores two doublewords from Xt1 and Xt2 to memory addressed by

base+imm, with a non-temporal hint.

5.2.6 Load-Store Unprivileged

The load-store unprivileged instructions may be used when the processor is at the EL1 exception level to perform

a memory access as if it were at the EL0 (unprivileged) exception level. If the processor is at any other exception

level, then a normal memory access for that level is performed. (The letter ‘T’ in these mnemonics is based on a historical ARM convention which described an access to an unprivileged virtual address as being “translated”.)

The load-store unprivileged instructions support an addressing mode of base register Xn or SP, plus:

• Unscaled, 9-bit, signed immediate offset, without pre- and post-index options
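As an illustration of the unscaled 9-bit signed offset, the following C sketch checks the byte-offset range (-256 to 255) and converts between a signed offset and a 9-bit two's complement field value. The helper names are hypothetical, and the sketch deliberately says nothing about where the field sits within the instruction word.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>

/* Hypothetical helpers for a 9-bit signed, unscaled byte offset. */
bool simm9_in_range(int32_t offset)
{
    return offset >= -256 && offset <= 255;
}

/* Returns the 9-bit two's complement field value for the offset. */
uint32_t simm9_encode(int32_t offset)
{
    assert(simm9_in_range(offset));
    return (uint32_t)offset & 0x1FFu;
}

/* Recovers the signed offset from a 9-bit field value. */
int32_t simm9_decode(uint32_t field)
{
    field &= 0x1FFu;
    return (field & 0x100u) ? (int32_t)field - 512 : (int32_t)field;
}
```

Because the offset is unscaled, any byte offset in range is representable regardless of the size of the access.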

LDTR Wt, [base,#simm9]

Load Unprivileged Register: loads a word from memory addressed by base+simm9 to Wt, using EL0

privileges when at EL1.

LDTR Xt, [base,#simm9]

Load Unprivileged Register (extended): loads a doubleword from memory addressed by base+simm9 to

Xt, using EL0 privileges when at EL1.

LDTRB Wt, [base,#simm9]

Load Unprivileged Byte: loads a byte from memory addressed by base+simm9, then zero-extends it into

Wt, using EL0 privileges when at EL1.

LDTRSB Wt, [base,#simm9]

Load Unprivileged Signed Byte: loads a byte from memory addressed by base+simm9, then sign-extends

it into Wt, using EL0 privileges when at EL1.


LDTRSB Xt, [base,#simm9]

Load Unprivileged Signed Byte (extended): loads a byte from memory addressed by base+simm9, then

sign-extends it into Xt, using EL0 privileges when at EL1.

LDTRH Wt, [base,#simm9]

Load Unprivileged Halfword: loads a halfword from memory addressed by base+simm9, then zero-extends it into Wt, using EL0 privileges when at EL1.

LDTRSH Wt, [base,#simm9]

Load Unprivileged Signed Halfword: loads a halfword from memory addressed by base+simm9, then

sign-extends it into Wt, using EL0 privileges when at EL1.

LDTRSH Xt, [base,#simm9]

Load Unprivileged Signed Halfword (extended): loads a halfword from memory addressed by

base+simm9, then sign-extends it into Xt, using EL0 privileges when at EL1.

LDTRSW Xt, [base,#simm9]

Load Unprivileged Signed Word (extended): loads a word from memory addressed by base+simm9, then

sign-extends it into Xt, using EL0 privileges when at EL1.
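The difference between the zero-extending and sign-extending loads above, and between their Wt and Xt forms, can be illustrated with a small C model of the value that ends up in the 64-bit register. This is a sketch only: the function names are hypothetical, the memory access and privilege check are not modelled, and it assumes the A64 convention that a write to Wt clears the upper 32 bits of the corresponding Xt.

```c
#include <assert.h>
#include <stdint.h>

/* Sign-extends the low `bits` bits of value to 64 bits. */
static uint64_t sign_extend(uint64_t value, unsigned bits)
{
    assert(bits >= 1 && bits <= 64);
    uint64_t sign = (uint64_t)1 << (bits - 1);
    value &= (sign << 1) - 1;        /* keep only the low `bits` bits */
    return (value ^ sign) - sign;    /* propagate the sign bit upward */
}

/* Each model returns the resulting 64-bit register contents. */
uint64_t model_ldtrb_w(uint8_t mem)   { return mem; }
uint64_t model_ldtrsb_w(uint8_t mem)  { return sign_extend(mem, 8) & 0xFFFFFFFFu; }
uint64_t model_ldtrsb_x(uint8_t mem)  { return sign_extend(mem, 8); }
uint64_t model_ldtrsh_x(uint16_t mem) { return sign_extend(mem, 16); }
uint64_t model_ldtrsw_x(uint32_t mem) { return sign_extend(mem, 32); }
```

For a byte value of 0x80 in memory, the model gives 0x80 for the zero-extending load, 0x00000000FFFFFF80 for the signed load into Wt, and 0xFFFFFFFFFFFFFF80 for the signed load into Xt.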

STTR Wt, [base,#simm9]

Store Unprivileged Register: stores a word from Wt to memory addressed by base+simm9, using EL0

privileges when at EL1.

STTR Xt, [base,#simm9]

Store Unprivileged Register (extended): stores a doubleword from Xt to memory addressed by

base+simm9, using EL0 privileges when at EL1.

STTRB Wt, [base,#simm9]

Store Unprivileged Byte: stores a byte from Wt to memory addressed by base+simm9, using EL0

privileges when at EL1.

STTRH Wt, [base,#simm9]

Store Unprivileged Halfword: stores a halfword from Wt to memory addressed by base+simm9, using

EL0 privileges when at EL1.

5.2.7 Load-Store Exclusive

The load exclusive instructions mark the physical address being accessed as an exclusive access, which is checked by the store exclusive, permitting the construction of “atomic” read-modify-write operations on shared memory variables, semaphores, mutexes, spinlocks, etc.

The load-store exclusive instructions support a simple addressing mode of base register Xn or SP only. An

optional offset of #0 must be accepted by the assembler, but may be omitted on disassembly.

Natural alignment is required: an unaligned address will cause an alignment fault. A memory access generated by

a load exclusive pair or store exclusive pair must be aligned to the size of the pair, and when a store exclusive pair

succeeds it will cause a single-copy atomic update of the entire memory location.

LDXR Wt, [base{,#0}]

Load Exclusive Register: loads a word from memory addressed by base to Wt. Records the physical

address as an exclusive access.

LDXR Xt, [base{,#0}]

Load Exclusive Register (extended): loads a doubleword from memory addressed by base to Xt.

Records the physical address as an exclusive access.


LDXRB Wt, [base{,#0}]

Load Exclusive Byte: loads a byte from memory addressed by base, then zero-extends it into Wt.

Records the physical address as an exclusive access.

LDXRH Wt, [base{,#0}]

Load Exclusive Halfword: loads a halfword from memory addressed by base, then zero-extends it into

Wt. Records the physical address as an exclusive access.

LDXP Wt, Wt2, [base{,#0}]

Load Exclusive Pair Registers: loads two words from memory addressed by base to Wt and Wt2.

Records the physical address as an exclusive access.

LDXP Xt, Xt2, [base{,#0}]

Load Exclusive Pair Registers (extended): loads two doublewords from memory addressed by base to

Xt and Xt2. Records the physical address as an exclusive access.

STXR Ws, Wt, [base{,#0}]

Store Exclusive Register: stores a word from Wt to memory addressed by base, and sets Ws to the returned

exclusive access status.

STXR Ws, Xt, [base{,#0}]

Store Exclusive Register (extended): stores a doubleword from Xt to memory addressed by base, and sets

Ws to the returned exclusive access status.

STXRB Ws, Wt, [base{,#0}]

Store Exclusive Byte: stores a byte from Wt to memory addressed by base, and sets Ws to the returned

exclusive access status.

STXRH Ws, Wt, [base{,#0}]

Store Exclusive Halfword: stores a halfword from Wt to memory addressed by base, and sets Ws to the

returned exclusive access status.

STXP Ws, Wt, Wt2, [base{,#0}]

Store Exclusive Pair: stores two words from Wt and Wt2 to memory addressed by base, and sets Ws to

the returned exclusive access status.

STXP Ws, Xt, Xt2, [base{,#0}]

Store Exclusive Pair (extended): stores two doublewords from Xt and Xt2 to memory addressed by

base, and sets Ws to the returned exclusive access status.
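The way a load exclusive and store exclusive combine into a read-modify-write retry loop can be sketched with a toy, single-threaded C model. Everything here is an illustrative assumption rather than an architectural definition: the monitor_t type and the model_* functions are hypothetical, the monitor tracks a single word address, and the status convention (0 for success, 1 for failure) is assumed for the value written to Ws.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical model of one observer's exclusive monitor. */
typedef struct {
    const void *addr;   /* address marked by the last load exclusive */
    bool armed;         /* true while the exclusive access is live   */
} monitor_t;

/* Models LDXR Wt: load the word and mark the address. */
uint32_t model_ldxr(monitor_t *m, const uint32_t *p)
{
    assert(m != NULL && p != NULL);
    m->addr = p;
    m->armed = true;
    return *p;
}

/* Models STXR Ws, Wt: store only if the mark is still live.
 * Returns the status for Ws (assumed: 0 = success, 1 = failure). */
uint32_t model_stxr(monitor_t *m, uint32_t *p, uint32_t value)
{
    assert(m != NULL && p != NULL);
    bool ok = m->armed && m->addr == p;
    m->armed = false;               /* the mark is consumed either way */
    if (ok)
        *p = value;
    return ok ? 0u : 1u;
}

/* Models a store by another observer to the same location. */
void model_other_store(monitor_t *m, uint32_t *p, uint32_t value)
{
    if (m->armed && m->addr == p)
        m->armed = false;
    *p = value;
}

/* Read-modify-write loop: retry until the store exclusive succeeds. */
uint32_t model_atomic_add(monitor_t *m, uint32_t *p, uint32_t delta)
{
    uint32_t old;
    do {
        old = model_ldxr(m, p);
    } while (model_stxr(m, p, old + delta) != 0u);
    return old;
}
```

In the model, a store from another observer between the load exclusive and the store exclusive makes the store exclusive report failure and leave memory unchanged, which is the case the retry loop exists to handle.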

5.2.8 Load-Acquire / Store-Release

A load-acquire is a load for which it is guaranteed that all loads and stores appearing in program order after the load-acquire will be observed by each observer after that observer observes the load-acquire. No guarantee is made about loads and stores appearing before the load-acquire.

A store-release will be observed by each observer after that observer observes any loads or stores that appear in program order before the store-release. No guarantee is made about loads and stores appearing after the store-release.

In addition, a store-release followed by a load-acquire will be observed by each observer in program order.

A further consideration is that all store-release operations must be multi-copy atomic: that is, if one agent has

seen a store-release, then all agents have seen the store-release. There are no requirements for ordinary stores

to be multi-copy atomic.

The load-acquire and store-release instructions support the simple addressing mode of base register Xn or SP

only. An optional offset of #0 must be accepted by the assembler, but may be omitted on disassembly.


Natural alignment is required: an unaligned address will cause an alignment fault.

5.2.8.1 Non-exclusive

LDAR Wt, [base{,#0}]

Load-Acquire Register: loads a word from memory addressed by base to Wt.

LDAR Xt, [base{,#0}]

Load-Acquire Register (extended): loads a doubleword from memory addressed by base to Xt.

LDARB Wt, [base{,#0}]

Load-Acquire Byte: loads a byte from memory addressed by base, then zero-extends it into Wt.

LDARH Wt, [base{,#0}]

Load-Acquire Halfword: loads a halfword from memory addressed by base, then zero-extends it into Wt.

STLR Wt, [base{,#0}]

Store-Release Register: stores a word from Wt to memory addressed by base.

STLR Xt, [base{,#0}]

Store-Release Register (extended): stores a doubleword from Xt to memory addressed by base.

STLRB Wt, [base{,#0}]

Store-Release Byte: stores a byte from Wt to memory addressed by base.

STLRH Wt, [base{,#0}]

Store-Release Halfword: stores a halfword from Wt to memory addressed by base.
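A common use of the ordering guarantees described in this section is publishing data through a flag. The C11 sketch below is an illustration under stated assumptions, not part of the architecture: the mailbox_t type and function names are hypothetical, and the mapping to instructions is only what compilers targeting A64 commonly choose for `memory_order_release` stores and `memory_order_acquire` loads (the STLR and LDAR families).

```c
#include <assert.h>
#include <stdatomic.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical one-slot mailbox shared between two observers. */
typedef struct {
    uint32_t payload;    /* ordinary (non-atomic) data       */
    atomic_bool ready;   /* flag written with store-release  */
} mailbox_t;

/* Producer: write the payload, then release the flag. The release
 * ordering keeps the payload write observable before the flag. */
void mailbox_publish(mailbox_t *mb, uint32_t value)
{
    assert(mb != NULL);
    mb->payload = value;
    atomic_store_explicit(&mb->ready, true, memory_order_release);
}

/* Consumer: acquire the flag, then read the payload. The acquire
 * ordering keeps the payload read after the flag read. */
bool mailbox_try_consume(mailbox_t *mb, uint32_t *out)
{
    assert(mb != NULL && out != NULL);
    if (!atomic_load_explicit(&mb->ready, memory_order_acquire))
        return false;
    *out = mb->payload;
    return true;
}
```

A consumer that sees the flag set is then guaranteed to read the payload written before the flag was released, without a separate barrier instruction.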

5.2.8.2 Exclusive

LDAXR Wt, [base{,#0}]

Load-Acquire Exclusive Register: loads a word from memory addressed by base to Wt. Records the

physical address as an exclusive access.

LDAXR Xt, [base{,#0}]

Load-Acquire Exclusive Register (extended): loads a doubleword from memory addressed by base to Xt.

Records the physical address as an exclusive access.

LDAXRB Wt, [base{,#0}]

Load-Acquire Exclusive Byte: loads a byte from memory addressed by base, then zero-extends it into Wt.

Records the physical address as an exclusive access.

LDAXRH Wt, [base{,#0}]

Load-Acquire Exclusive Halfword: loads a halfword from memory addressed by base, then zero-extends it

into Wt. Records the physical address as an exclusive access.

LDAXP Wt, Wt2, [base{,#0}]

Load-Acquire Exclusive Pair Registers: loads two words from memory addressed by base to Wt and Wt2.

Records the physical address as an exclusive access.