Amazon Simple Storage Service Developer Guide

Page Count: 623

- Amazon Simple Storage Service

- Table of Contents

- What Is Amazon S3?

- Introduction to Amazon S3

- Making Requests

- About Access Keys

- Request Endpoints

- Making Requests to Amazon S3 over IPv6

- Making Requests Using the AWS SDKs

- Making Requests Using AWS Account or IAM User Credentials

- Making Requests Using AWS Account or IAM User Credentials - AWS SDK for Java

- Making Requests Using AWS Account or IAM User Credentials - AWS SDK for .NET

- Making Requests Using AWS Account or IAM User Credentials - AWS SDK for PHP

- Making Requests Using AWS Account or IAM User Credentials - AWS SDK for Ruby

- Making Requests Using IAM User Temporary Credentials

- Making Requests Using Federated User Temporary Credentials

- Making Requests Using Federated User Temporary Credentials - AWS SDK for Java

- Making Requests Using Federated User Temporary Credentials - AWS SDK for .NET

- Making Requests Using Federated User Temporary Credentials - AWS SDK for PHP

- Making Requests Using Federated User Temporary Credentials - AWS SDK for Ruby

- Making Requests Using the REST API

- Working with Amazon S3 Buckets

- Creating a Bucket

- Accessing a Bucket

- Bucket Configuration Options

- Bucket Restrictions and Limitations

- Examples of Creating a Bucket

- Deleting or Emptying a Bucket

- Managing Bucket Website Configuration

- Amazon S3 Transfer Acceleration

- Why Use Amazon S3 Transfer Acceleration?

- Getting Started with Amazon S3 Transfer Acceleration

- Requirements for Using Amazon S3 Transfer Acceleration

- Amazon S3 Transfer Acceleration Examples

- Requester Pays Buckets

- Buckets and Access Control

- Billing and Reporting of Buckets

- Working with Amazon S3 Objects

- Object Key and Metadata

- Storage Classes

- Object Subresources

- Object Versioning

- Object Lifecycle Management

- What Is Lifecycle Configuration?

- How Do I Configure a Lifecycle?

- Transitioning Objects: General Considerations

- Expiring Objects: General Considerations

- Lifecycle and Other Bucket Configurations

- Lifecycle Configuration Elements

- ID Element

- Status Element

- Prefix Element

- Elements to Describe Lifecycle Actions

- Examples of Lifecycle Configuration

- Example 1: Specify a Lifecycle Rule for a Subset of Objects in a Bucket

- Example 2: Specify a Lifecycle Rule that Applies to All Objects in the Bucket

- Example 3: Disable a Lifecycle Rule

- Example 4: Tiering Down Storage Class Over Object Lifetime

- Example 5: Specify Multiple Rules

- Example 6: Specify Multiple Rules with Overlapping Prefixes

- Example 7: Specify a Lifecycle Rule for a Versioning-Enabled Bucket

- Example 8: Removing Expired Object Delete Markers

- GLACIER Storage Class: Additional Lifecycle Configuration Considerations

- Specifying a Lifecycle Configuration

- Cross-Origin Resource Sharing (CORS)

- Operations on Objects

- Getting Objects

- Uploading Objects

- Uploading Objects in a Single Operation

- Uploading Objects Using Multipart Upload API

- Multipart Upload Overview

- Using the AWS Java SDK for Multipart Upload (High-Level API)

- Using the AWS Java SDK for Multipart Upload (Low-Level API)

- Using the AWS .NET SDK for Multipart Upload (High-Level API)

- Using the AWS .NET SDK for Multipart Upload (Low-Level API)

- Using the AWS PHP SDK for Multipart Upload (High-Level API)

- Using the AWS PHP SDK for Multipart Upload (Low-Level API)

- Using the AWS SDK for Ruby for Multipart Upload

- Using the REST API for Multipart Upload

- Uploading Objects Using Pre-Signed URLs

- Copying Objects

- Listing Object Keys

- Deleting Objects

- Restoring Archived Objects

- Managing Access Permissions to Your Amazon S3 Resources

- Introduction to Managing Access Permissions to Your Amazon S3 Resources

- Overview of Managing Access

- How Amazon S3 Authorizes a Request

- Related Topics

- How Amazon S3 Authorizes a Request for a Bucket Operation

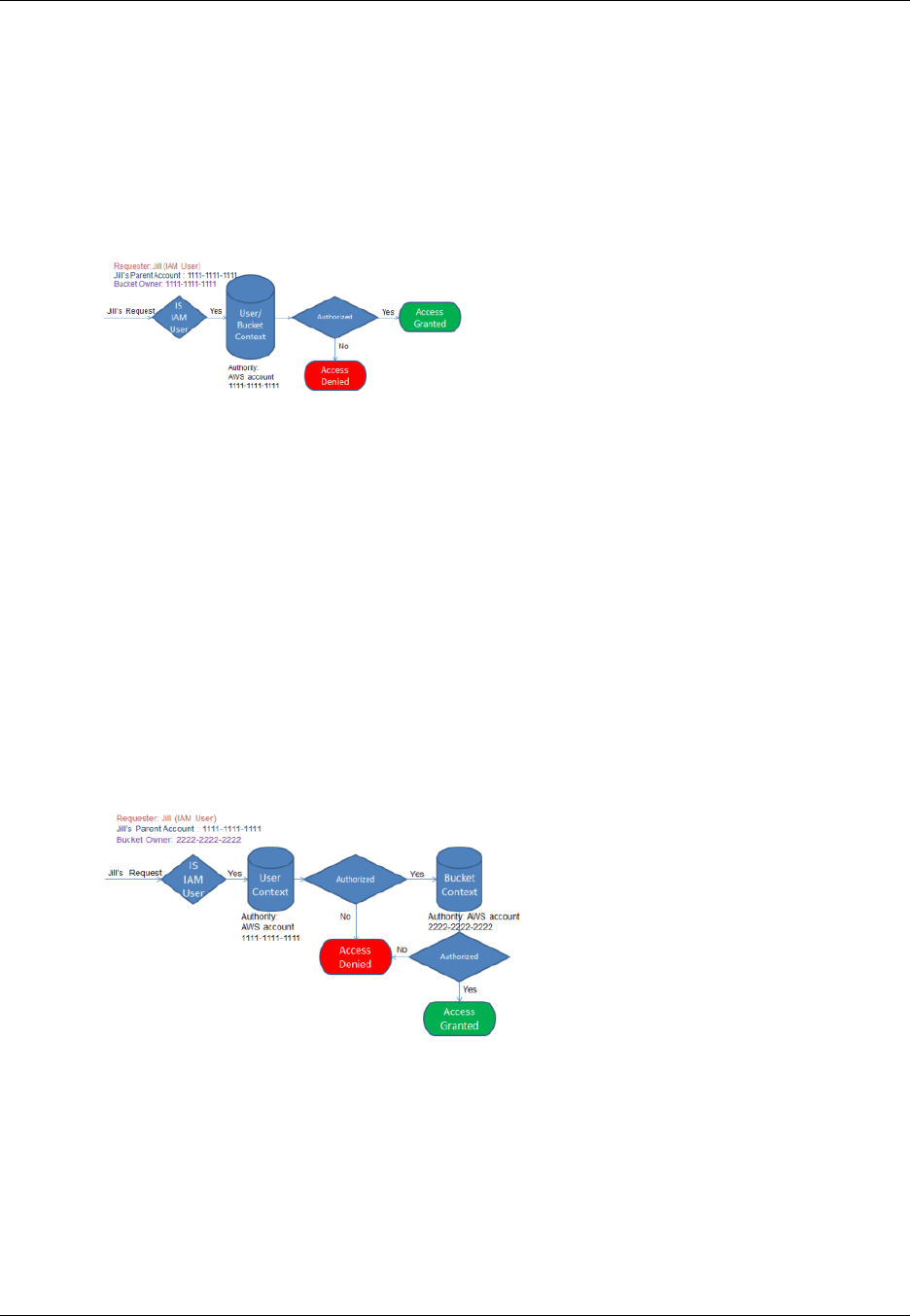

- Example 1: Bucket Operation Requested by Bucket Owner

- Example 2: Bucket Operation Requested by an AWS Account That Is Not the Bucket Owner

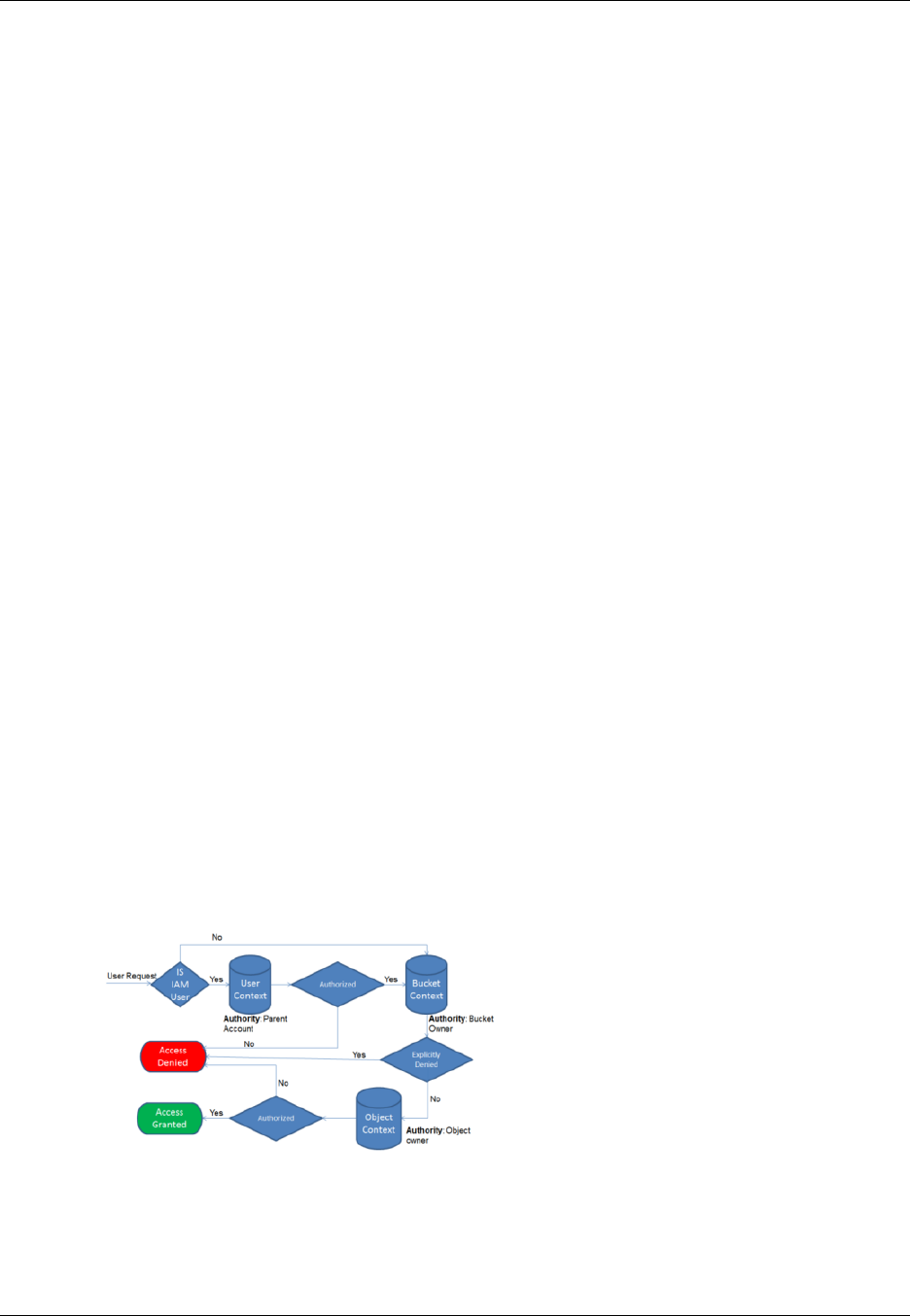

- Example 3: Bucket Operation Requested by an IAM User Whose Parent AWS Account Is Also the Bucket Owner

- Example 4: Bucket Operation Requested by an IAM User Whose Parent AWS Account Is Not the Bucket Owner

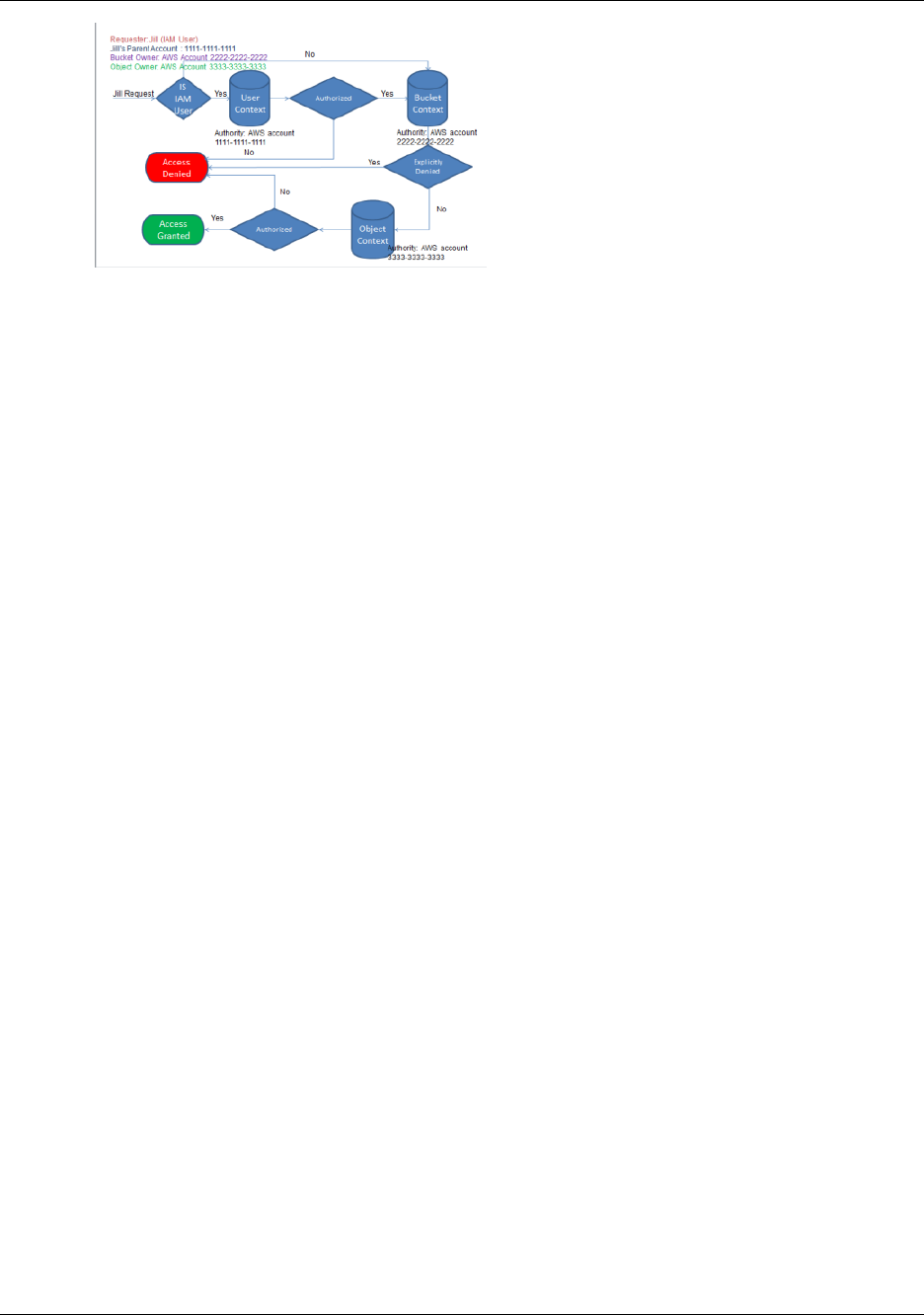

- How Amazon S3 Authorizes a Request for an Object Operation

- Guidelines for Using the Available Access Policy Options

- Example Walkthroughs: Managing Access to Your Amazon S3 Resources

- Before You Try the Example Walkthroughs

- Setting Up the Tools for the Example Walkthroughs

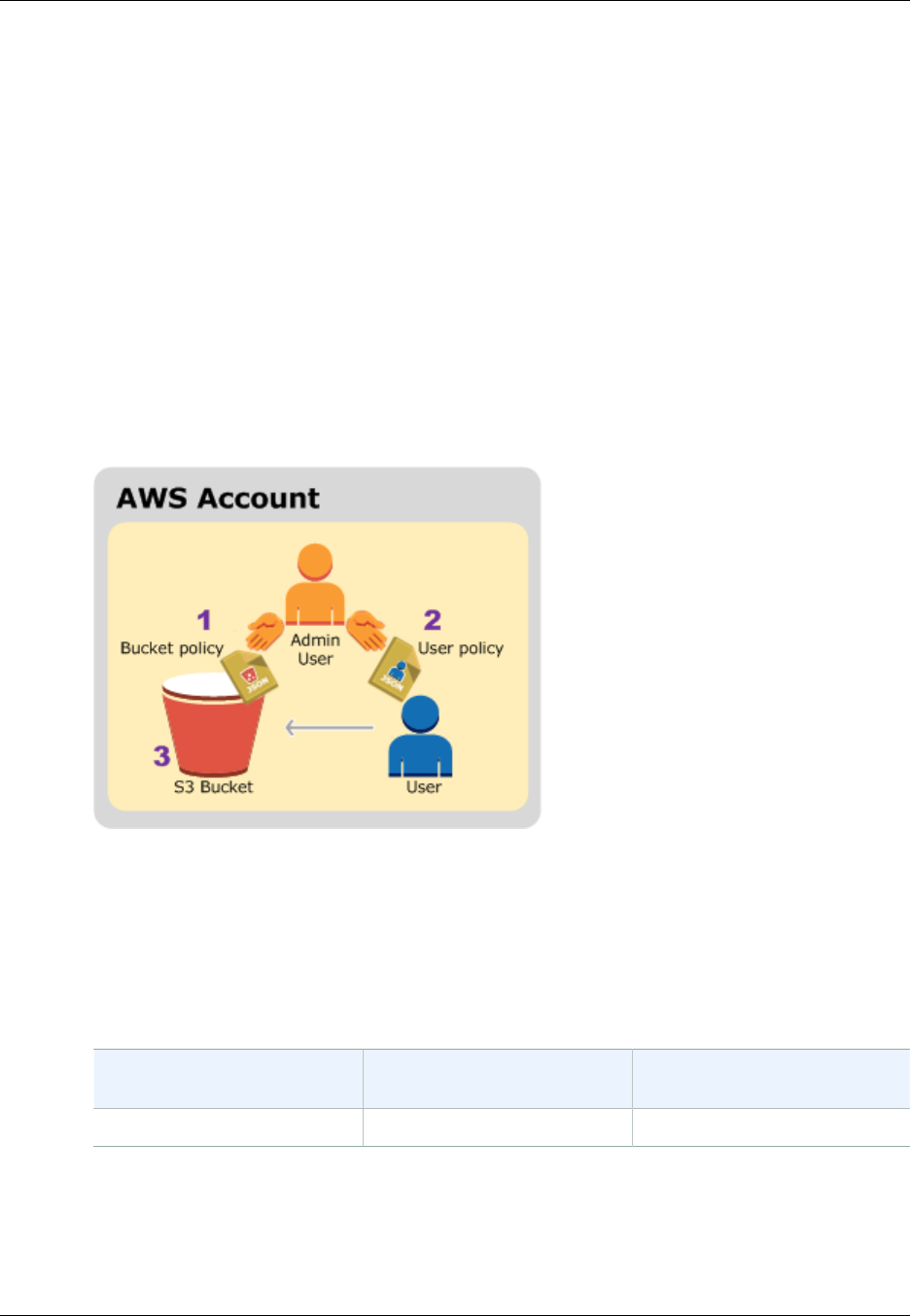

- Example 1: Bucket Owner Granting Its Users Bucket Permissions

- Example 2: Bucket Owner Granting Cross-Account Bucket Permissions

- Example 3: Bucket Owner Granting Its Users Permissions to Objects It Does Not Own

- Example 4: Bucket Owner Granting Cross-Account Permission to Objects It Does Not Own

- Using Bucket Policies and User Policies

- Access Policy Language Overview

- Common Elements in an Access Policy

- Specifying Resources in a Policy

- Specifying a Principal in a Policy

- Specifying Permissions in a Policy

- Specifying Conditions in a Policy

- Available Condition Keys

- Amazon S3 Condition Keys for Object Operations

- Example 1: Granting s3:PutObject permission with a condition requiring the bucket owner to get full control

- Example 2: Granting s3:PutObject permission requiring objects stored using server-side encryption

- Example 3: Granting s3:PutObject permission to copy objects with a restriction on the copy source

- Example 4: Granting access to a specific version of an object

- Example 5: Restrict object uploads to objects with a specific storage class

- Amazon S3 Condition Keys for Bucket Operations

- Bucket Policy Examples

- Granting Permissions to Multiple Accounts with Added Conditions

- Granting Read-Only Permission to an Anonymous User

- Restricting Access to Specific IP Addresses

- Restricting Access to a Specific HTTP Referrer

- Granting Permission to an Amazon CloudFront Origin Identity

- Adding a Bucket Policy to Require MFA Authentication

- Granting Cross-Account Permissions to Upload Objects While Ensuring the Bucket Owner Has Full Control

- Example Bucket Policies for VPC Endpoints for Amazon S3

- User Policy Examples

- Example: Allow an IAM user access to one of your buckets

- Example: Allow each IAM user access to a folder in a bucket

- Example: Allow a group to have a shared folder in Amazon S3

- Example: Allow all your users to read objects in a portion of the corporate bucket

- Example: Allow a partner to drop files into a specific portion of the corporate bucket

- An Example Walkthrough: Using user policies to control access to your bucket

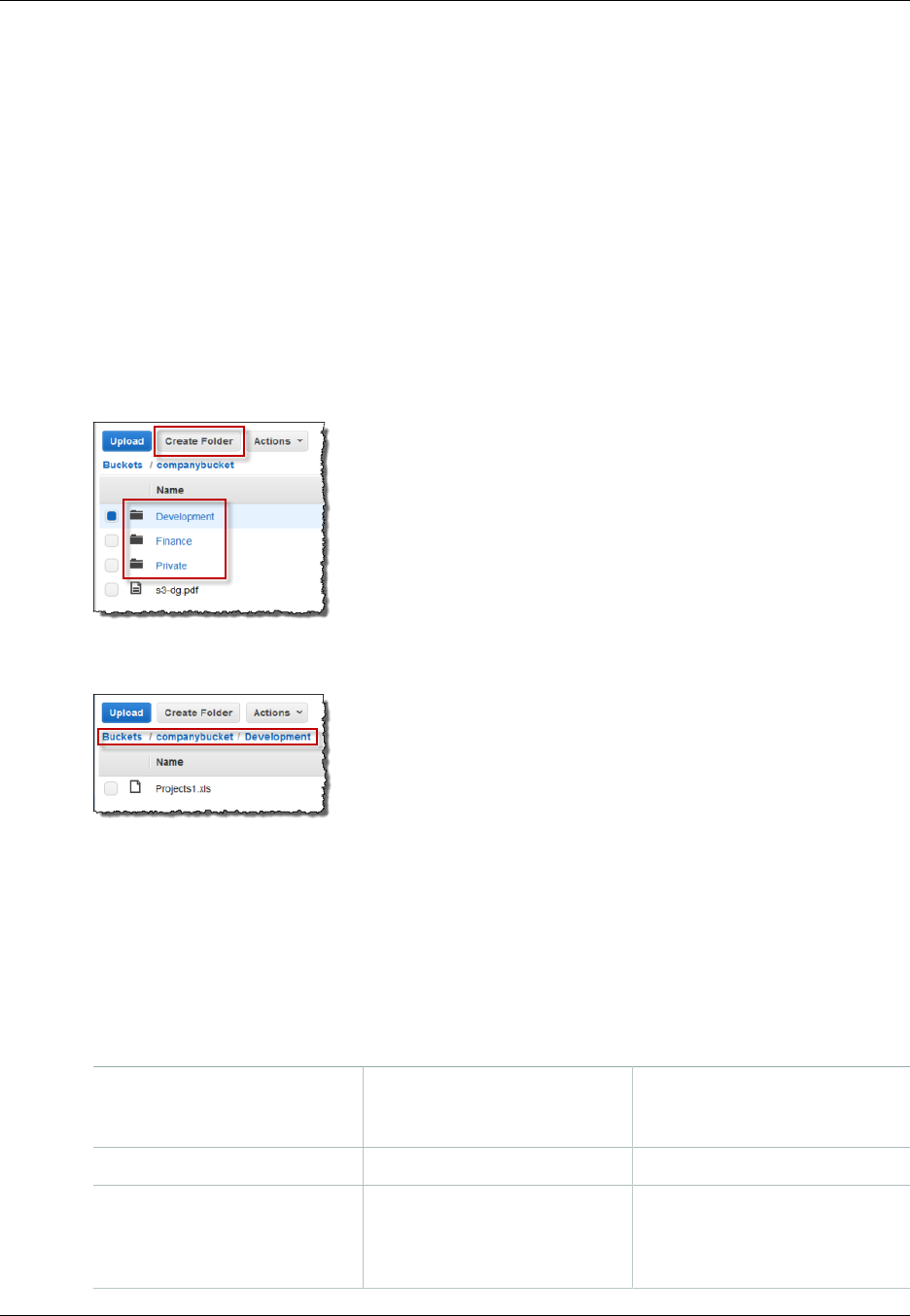

- Background: Basics of Buckets and Folders

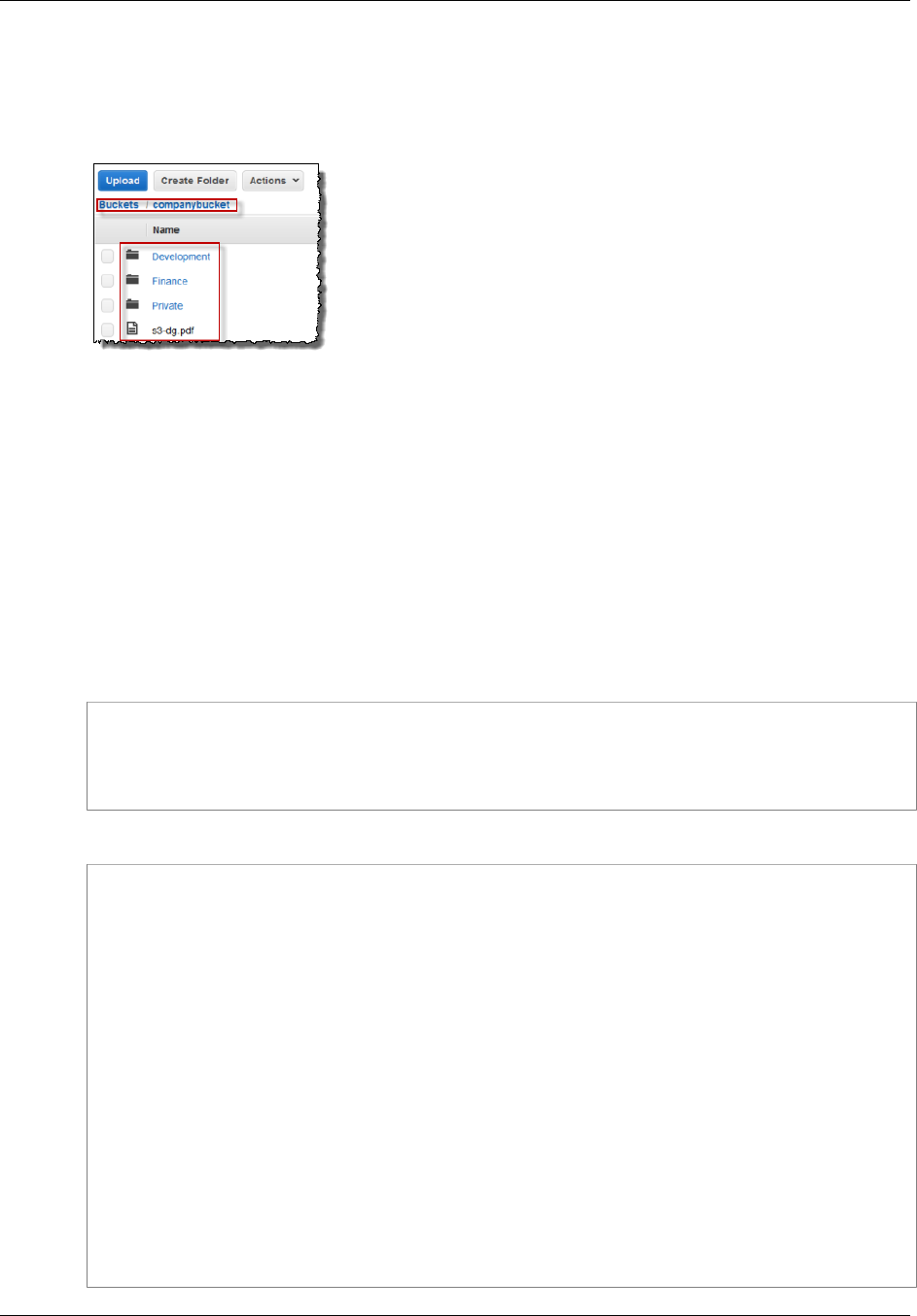

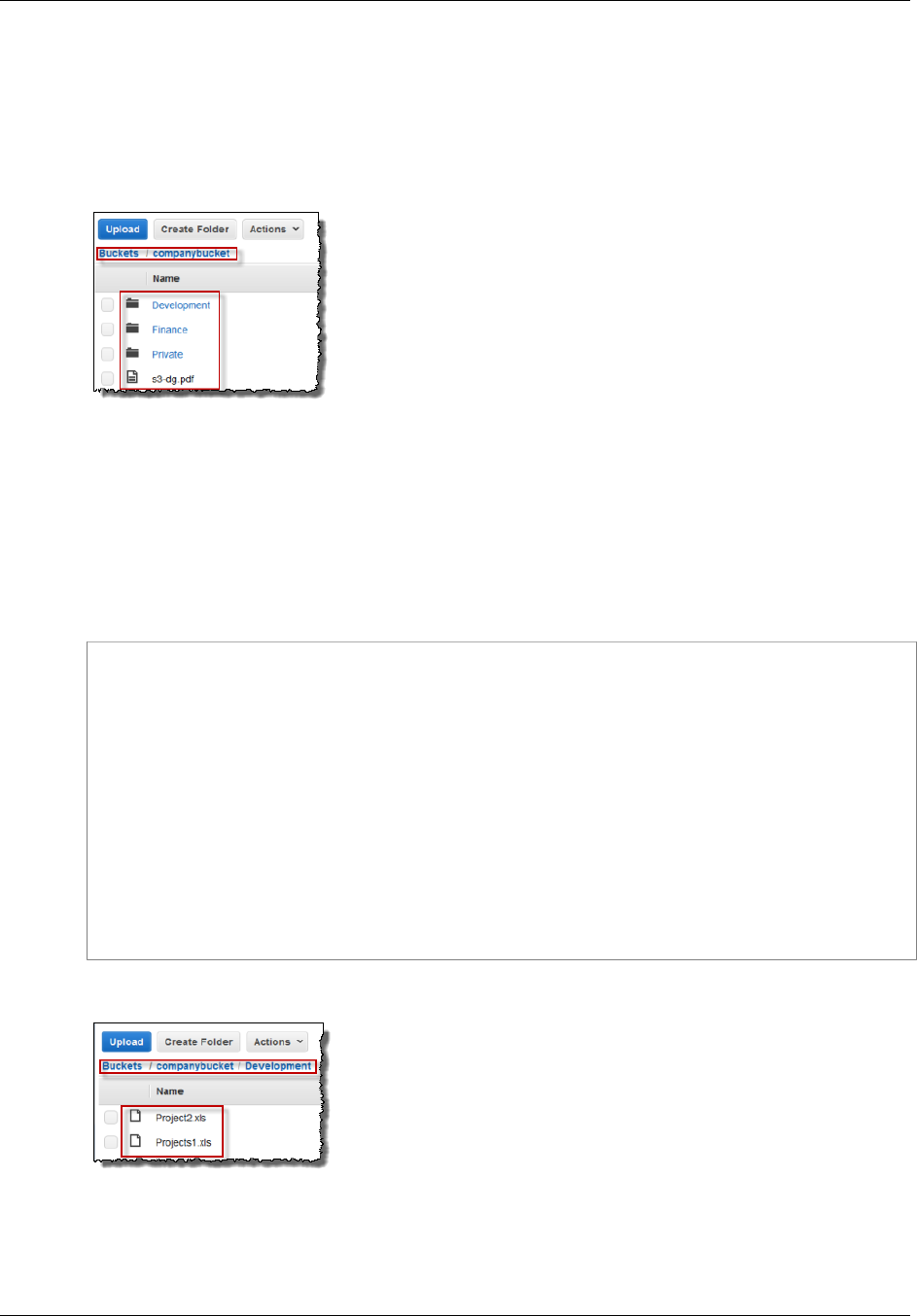

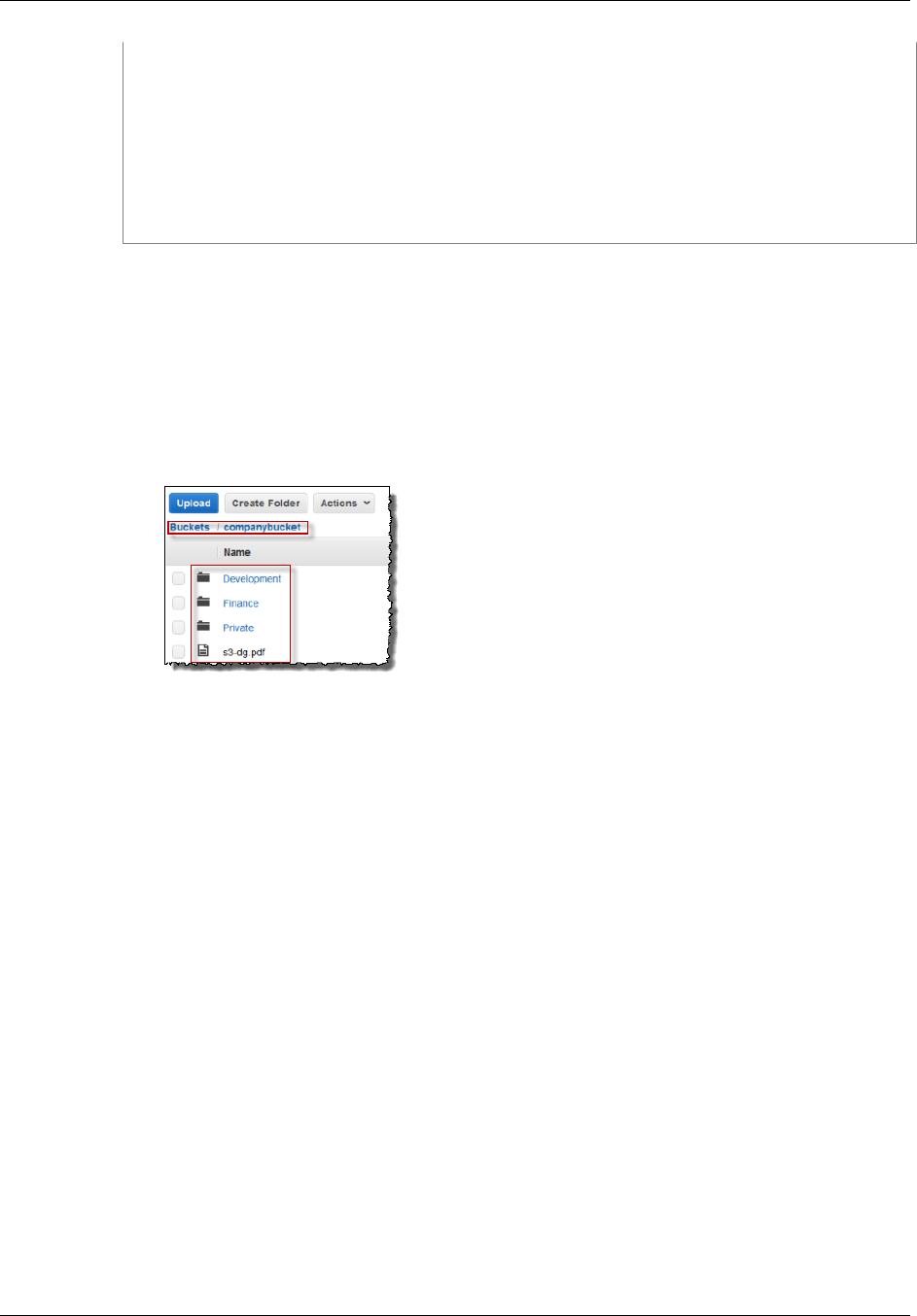

- Walkthrough Example

- Step 0: Preparing for the Walkthrough

- Step 1: Create a Bucket

- Step 2: Create IAM Users and a Group

- Step 3: Verify that IAM Users Have No Permissions

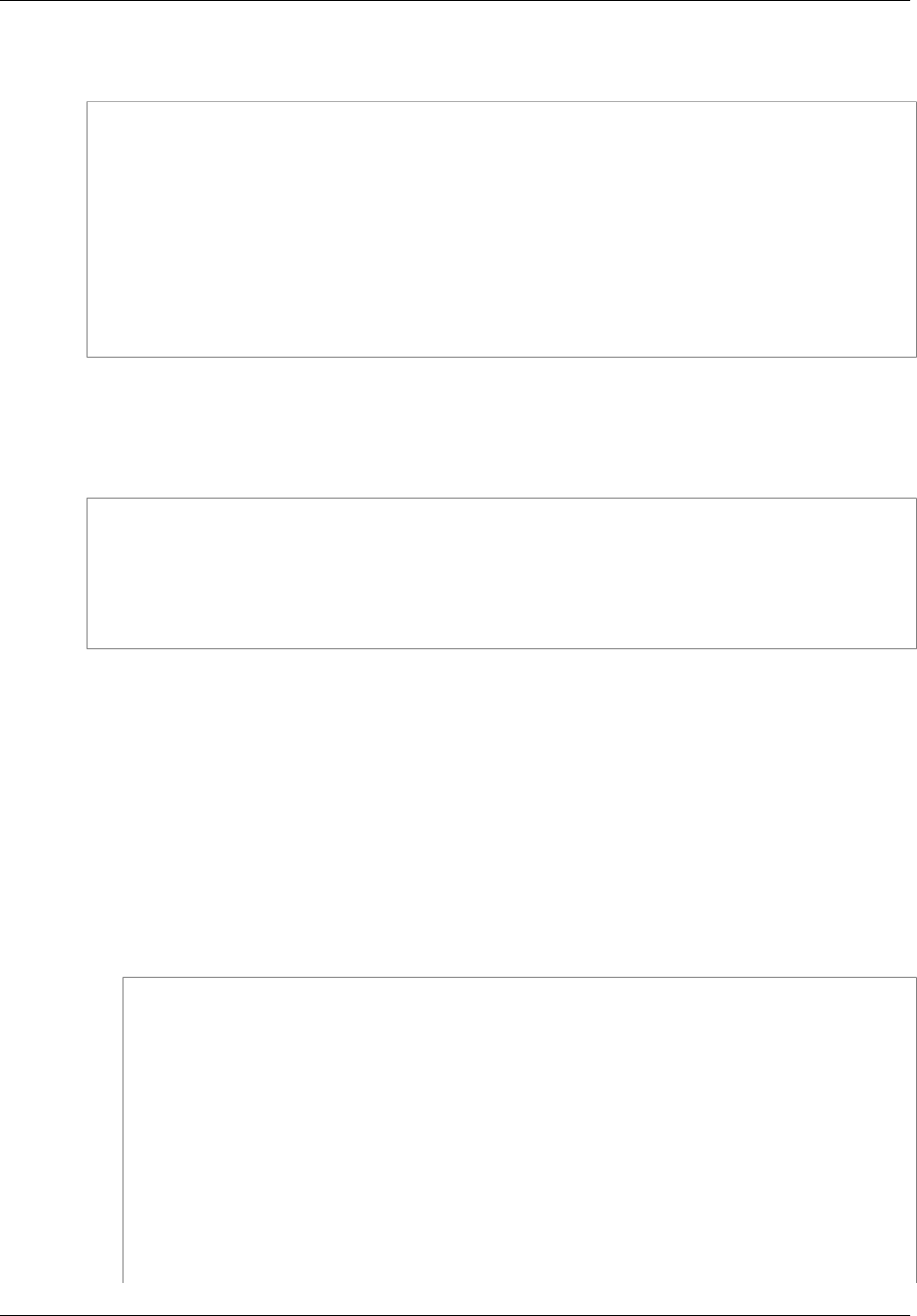

- Step 4: Grant Group-Level Permissions

- Step 5: Grant IAM User Alice Specific Permissions

- Step 6: Grant IAM User Bob Specific Permissions

- Step 7: Secure the Private Folder

- Cleanup

- Related Resources

- Managing Access with ACLs

- Protecting Data in Amazon S3

- Protecting Data Using Encryption

- Protecting Data Using Server-Side Encryption

- Protecting Data Using Server-Side Encryption with AWS KMS–Managed Keys (SSE-KMS)

- Protecting Data Using Server-Side Encryption with Amazon S3-Managed Encryption Keys (SSE-S3)

- API Support for Server-Side Encryption

- Specifying Server-Side Encryption Using the AWS SDK for Java

- Specifying Server-Side Encryption Using the AWS SDK for .NET

- Specifying Server-Side Encryption Using the AWS SDK for PHP

- Specifying Server-Side Encryption Using the AWS SDK for Ruby

- Specifying Server-Side Encryption Using the REST API

- Specifying Server-Side Encryption Using the AWS Management Console

- Protecting Data Using Server-Side Encryption with Customer-Provided Encryption Keys (SSE-C)

- Using SSE-C

- Presigned URL and SSE-C

- Specifying Server-Side Encryption with Customer-Provided Encryption Keys Using the AWS Java SDK

- Specifying Server-Side Encryption with Customer-Provided Encryption Keys Using the .NET SDK

- Specifying Server-Side Encryption with Customer-Provided Encryption Keys Using the REST API

- Protecting Data Using Client-Side Encryption

- Option 1: Using an AWS KMS–Managed Customer Master Key (CMK)

- Option 2: Using a Client-Side Master Key

- Example: Client-Side Encryption (Option 1: Using an AWS KMS–Managed Customer Master Key (AWS SDK for Java))

- Examples: Client-Side Encryption (Option 2: Using a Client-Side Master Key (AWS SDK for Java))

- Protecting Data Using Server-Side Encryption

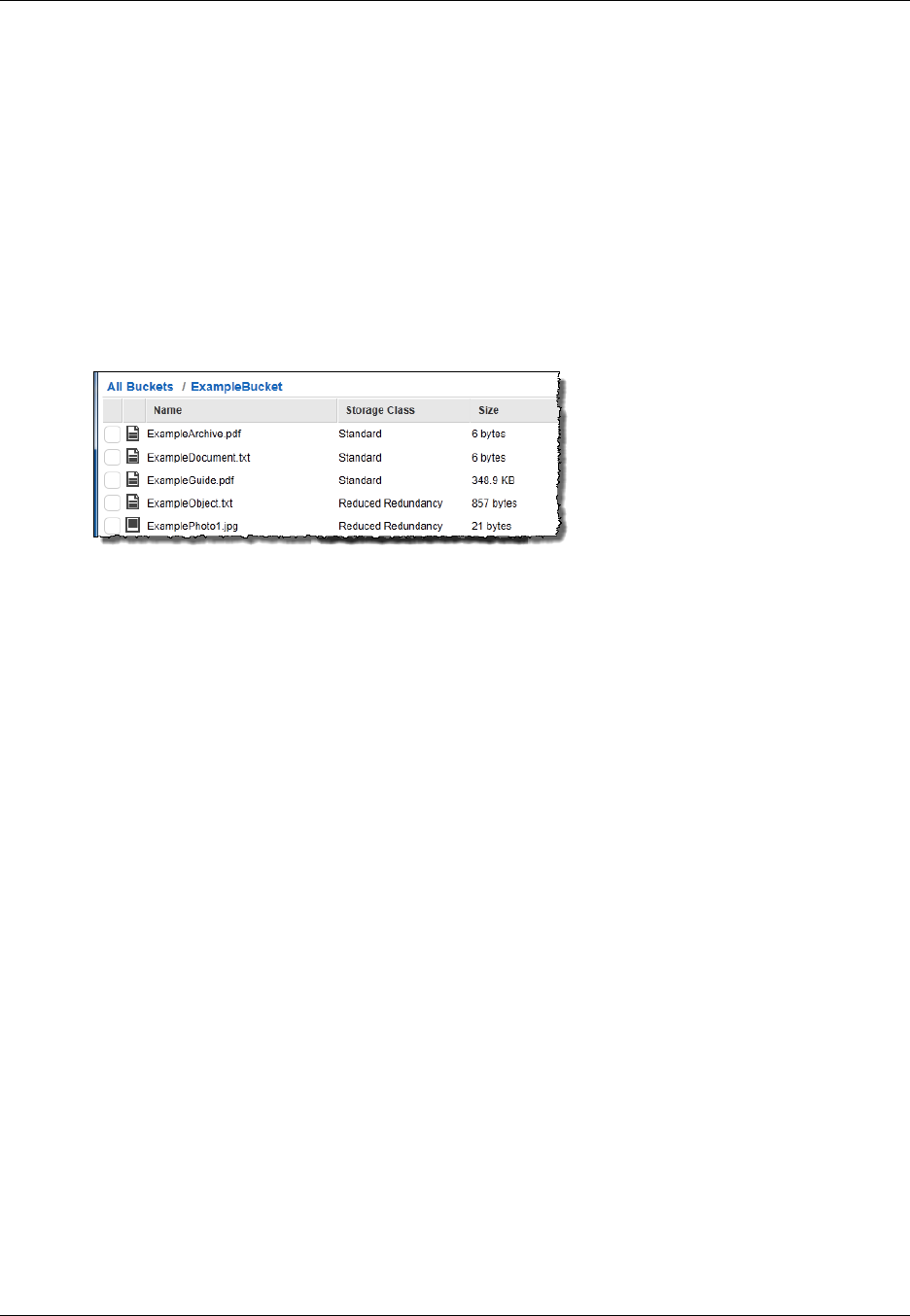

- Using Reduced Redundancy Storage

- Using Versioning

- How to Configure Versioning on a Bucket

- MFA Delete

- Related Topics

- Examples of Enabling Bucket Versioning

- Managing Objects in a Versioning-Enabled Bucket

- Managing Objects in a Versioning-Suspended Bucket

- Hosting a Static Website on Amazon S3

- Website Endpoints

- Configure a Bucket for Website Hosting

- Example Walkthroughs - Hosting Websites On Amazon S3

- Example: Setting Up a Static Website

- Example: Setting Up a Static Website Using a Custom Domain

- Configuring Amazon S3 Event Notifications

- Overview

- How to Enable Event Notifications

- Event Notification Types and Destinations

- Configuring Notifications with Object Key Name Filtering

- Granting Permissions to Publish Event Notification Messages to a Destination

- Example Walkthrough 1: Configure a Bucket for Notifications (Message Destination: SNS Topic and SQS Queue)

- Example Walkthrough 2: Configure a Bucket for Notifications (Message Destination: AWS Lambda)

- Event Message Structure

- Cross-Region Replication

- Use-case Scenarios

- Requirements

- Related Topics

- What Is and Is Not Replicated

- How to Set Up Cross-Region Replication

- Create an IAM Role

- Add Replication Configuration

- Walkthrough 1: Configure Cross-Region Replication Where Source and Destination Buckets Are Owned by the Same AWS Account

- Walkthrough 2: Configure Cross-Region Replication Where Source and Destination Buckets Are Owned by Different AWS Accounts

- How to Set Up Cross-Region Replication Using the Console

- How to Set Up Cross-Region Replication Using the AWS SDK for Java

- How to Set Up Cross-Region Replication Using the AWS SDK for .NET

- How to Find Replication Status of an Object

- Troubleshooting Cross-Region Replication in Amazon S3

- Cross-Region Replication and Other Bucket Configurations

- Request Routing

- Performance Optimization

- Monitoring Amazon S3 with Amazon CloudWatch

- Logging Amazon S3 API Calls By Using AWS CloudTrail

- Using BitTorrent with Amazon S3

- Using Amazon DevPay with Amazon S3

- Handling REST and SOAP Errors

- Troubleshooting Amazon S3

- Server Access Logging

- Using the AWS SDKs, CLI, and Explorers

- Appendices

- Appendix A: Using the SOAP API

- Appendix B: Authenticating Requests (AWS Signature Version 2)

- Authenticating Requests Using the REST API

- Signing and Authenticating REST Requests

- Using Temporary Security Credentials

- The Authentication Header

- Request Canonicalization for Signing

- Constructing the CanonicalizedResource Element

- Constructing the CanonicalizedAmzHeaders Element

- Positional versus Named HTTP Header StringToSign Elements

- Time Stamp Requirement

- Authentication Examples

- REST Request Signing Problems

- Query String Request Authentication Alternative

- Browser-Based Uploads Using POST (AWS Signature Version 2)

- Amazon S3 Resources

- Document History

- AWS Glossary

Amazon Simple Storage Service Developer Guide

API Version 2006-03-01

Amazon Simple Storage Service: Developer Guide

Copyright © 2016 Amazon Web Services, Inc. and/or its affiliates. All rights reserved.

Amazon's trademarks and trade dress may not be used in connection with any product or service that is not Amazon's, in any manner that is likely to cause confusion among customers, or in any manner that disparages or discredits Amazon. All other trademarks not owned by Amazon are the property of their respective owners, who may or may not be affiliated with, connected to, or sponsored by Amazon.

Amazon Simple Storage Service Developer Guide

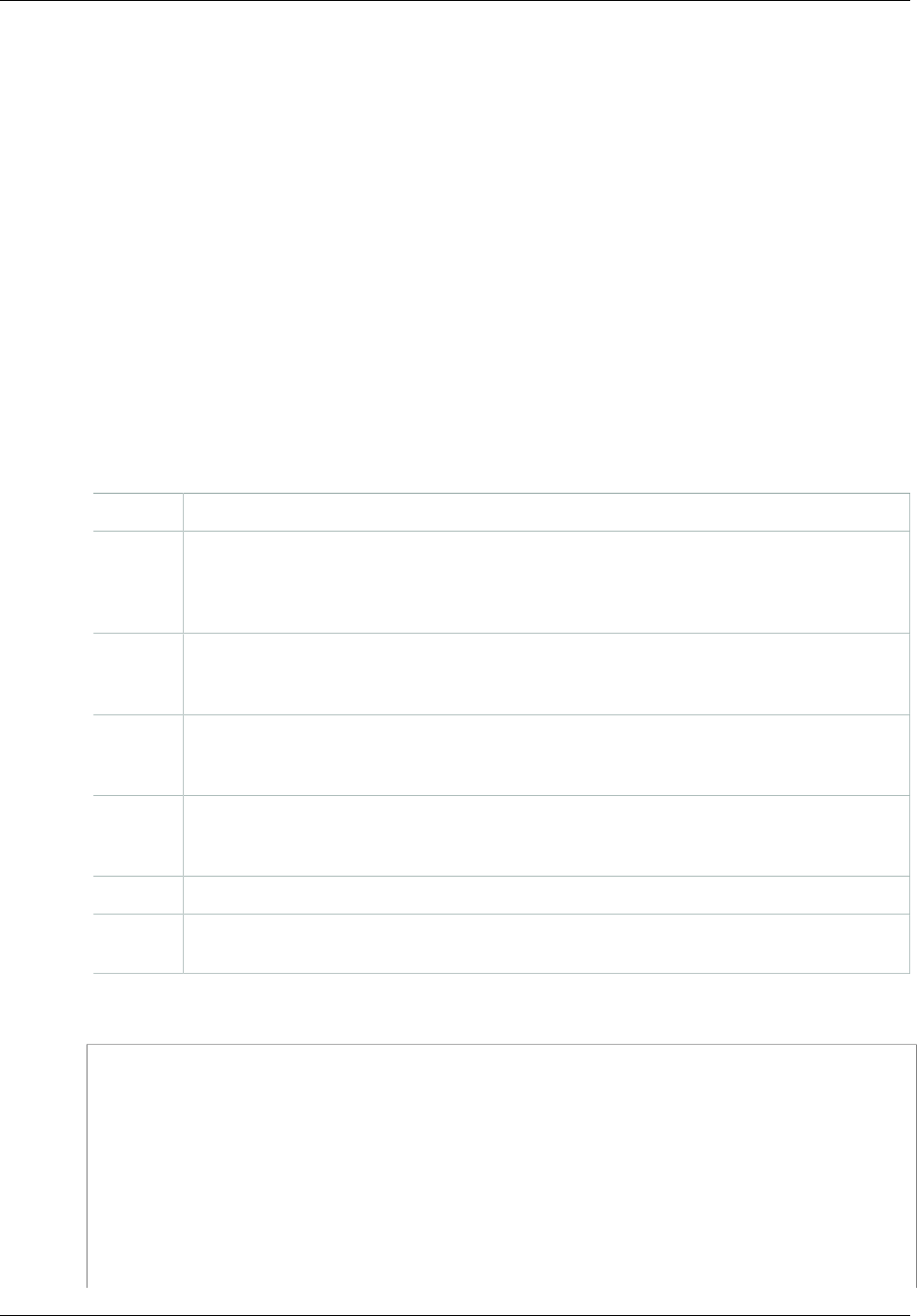

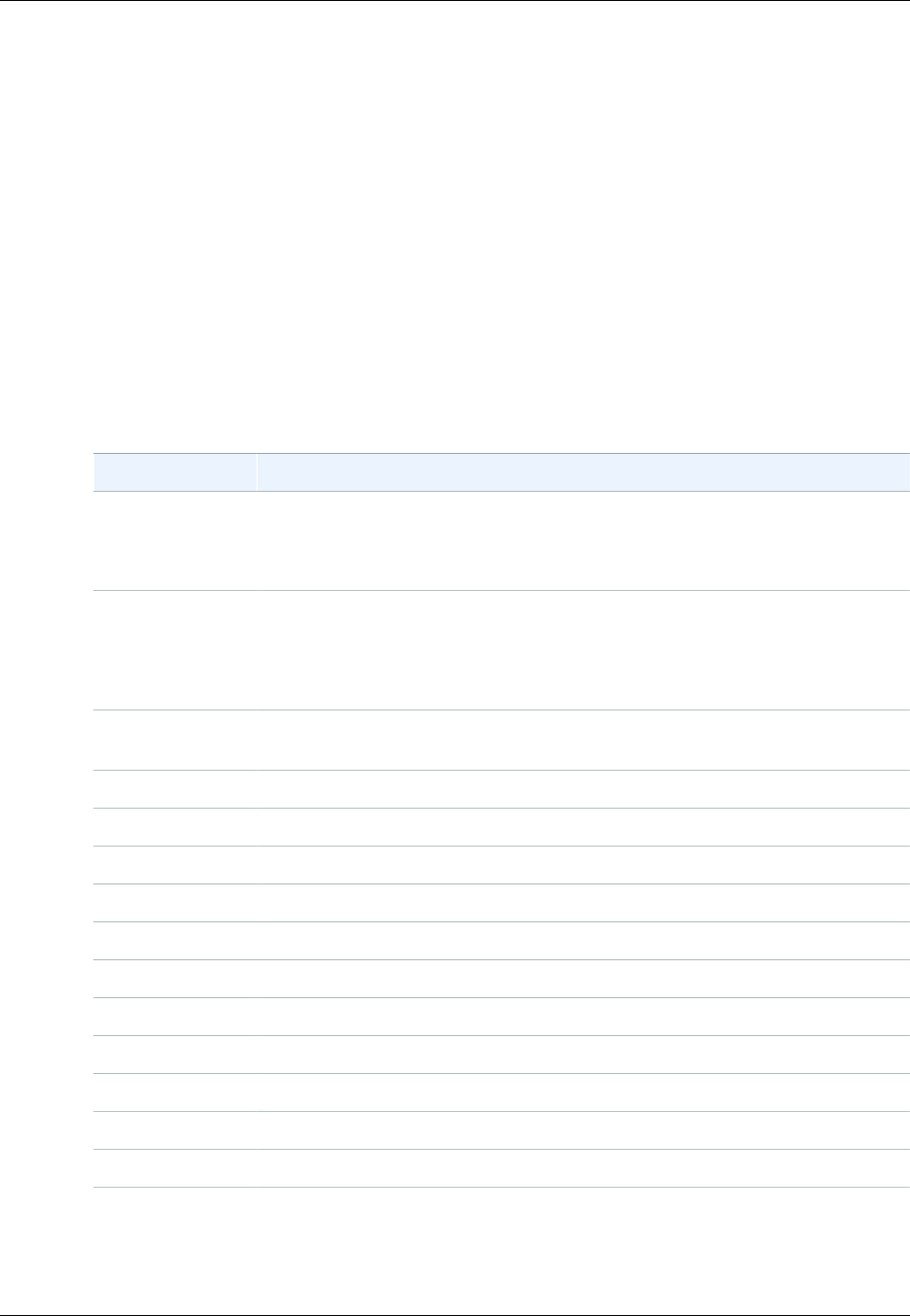

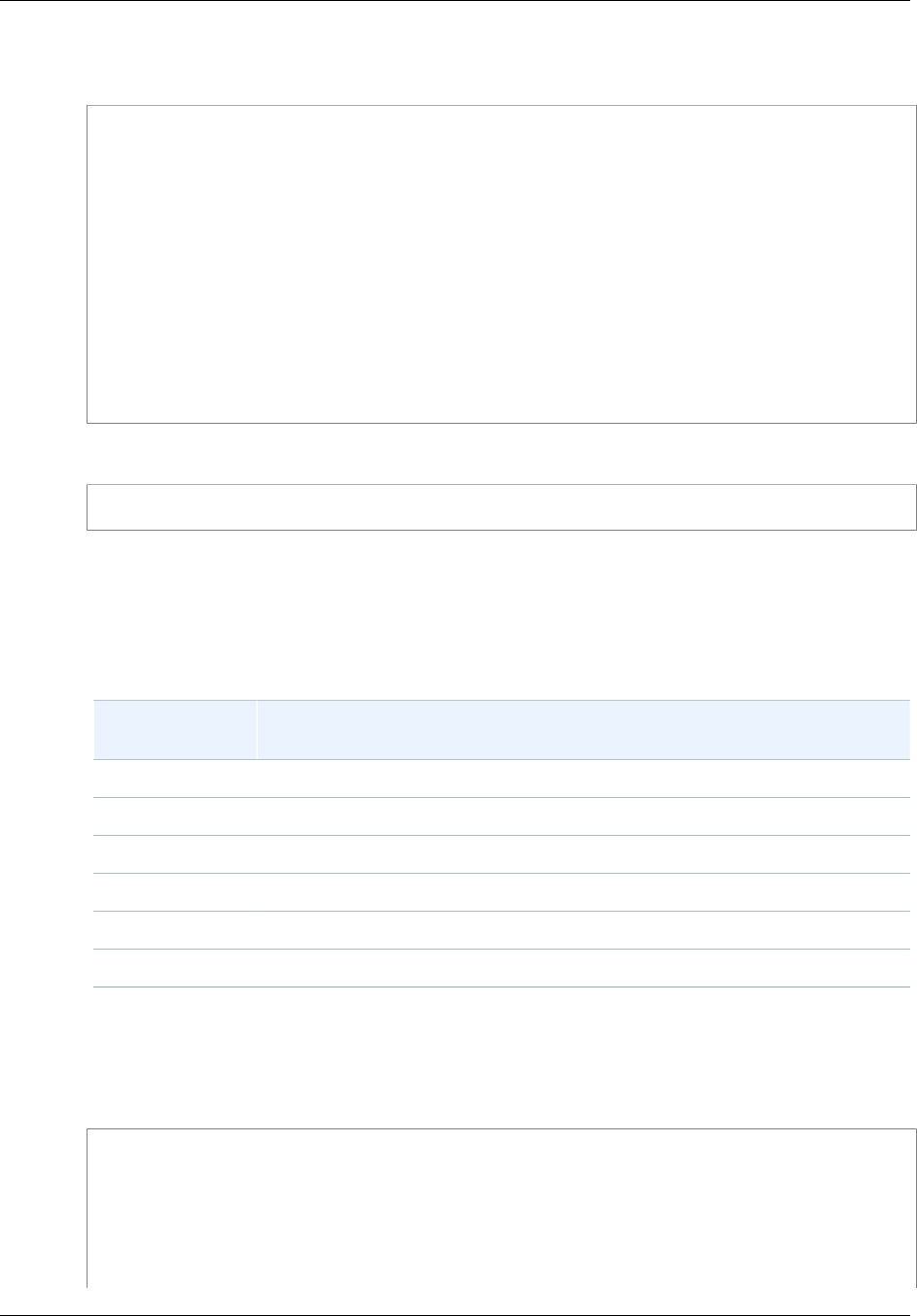

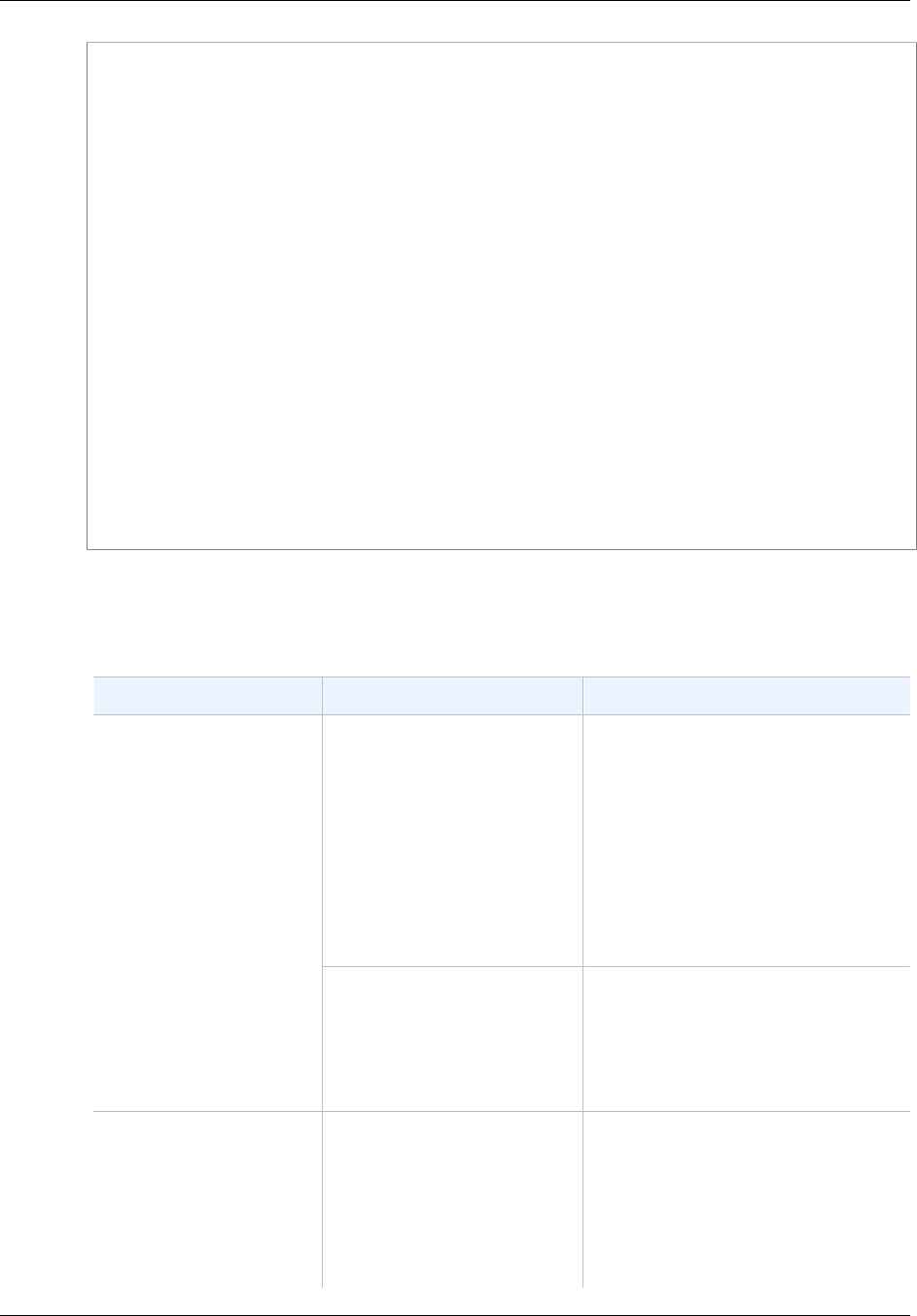

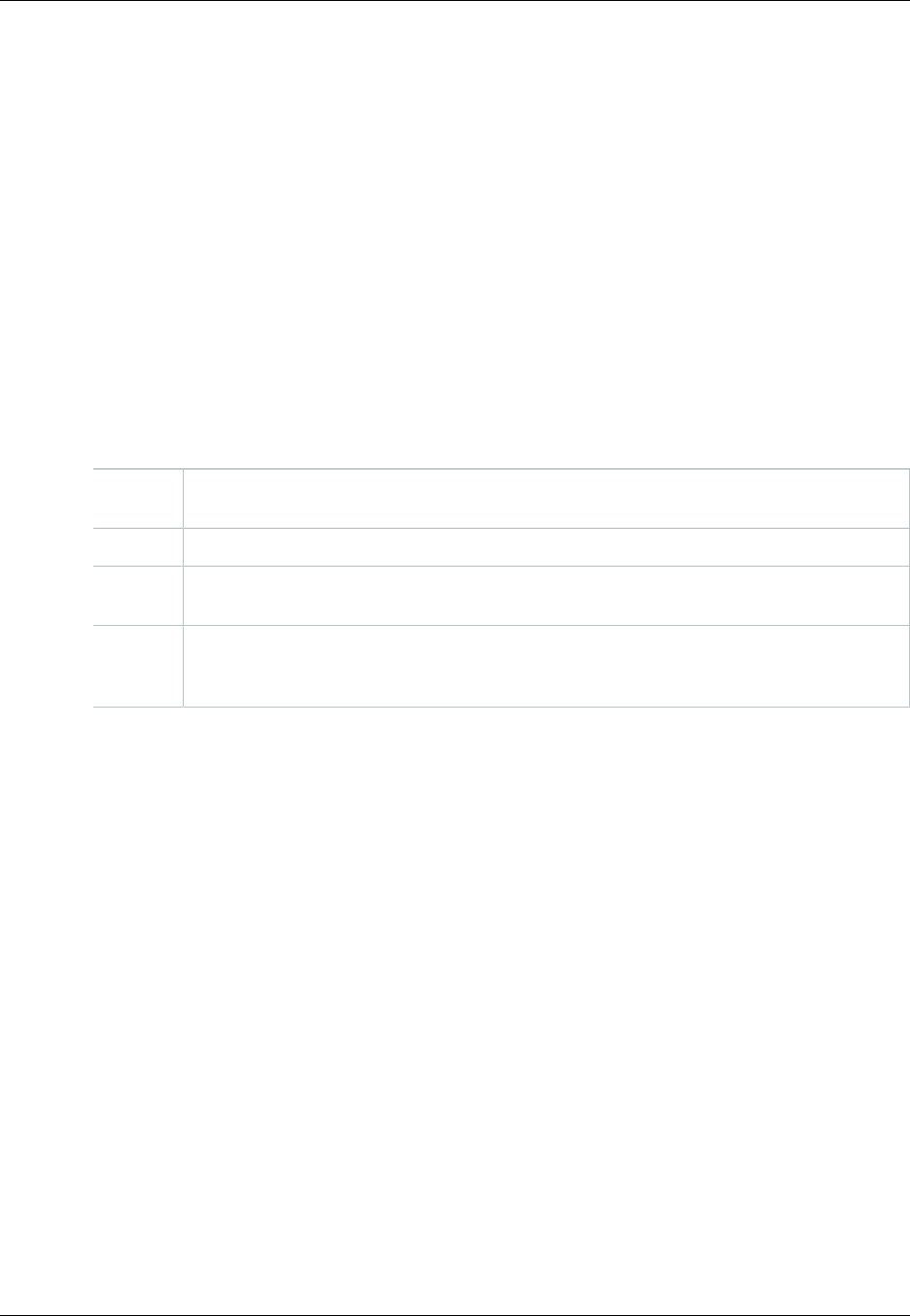

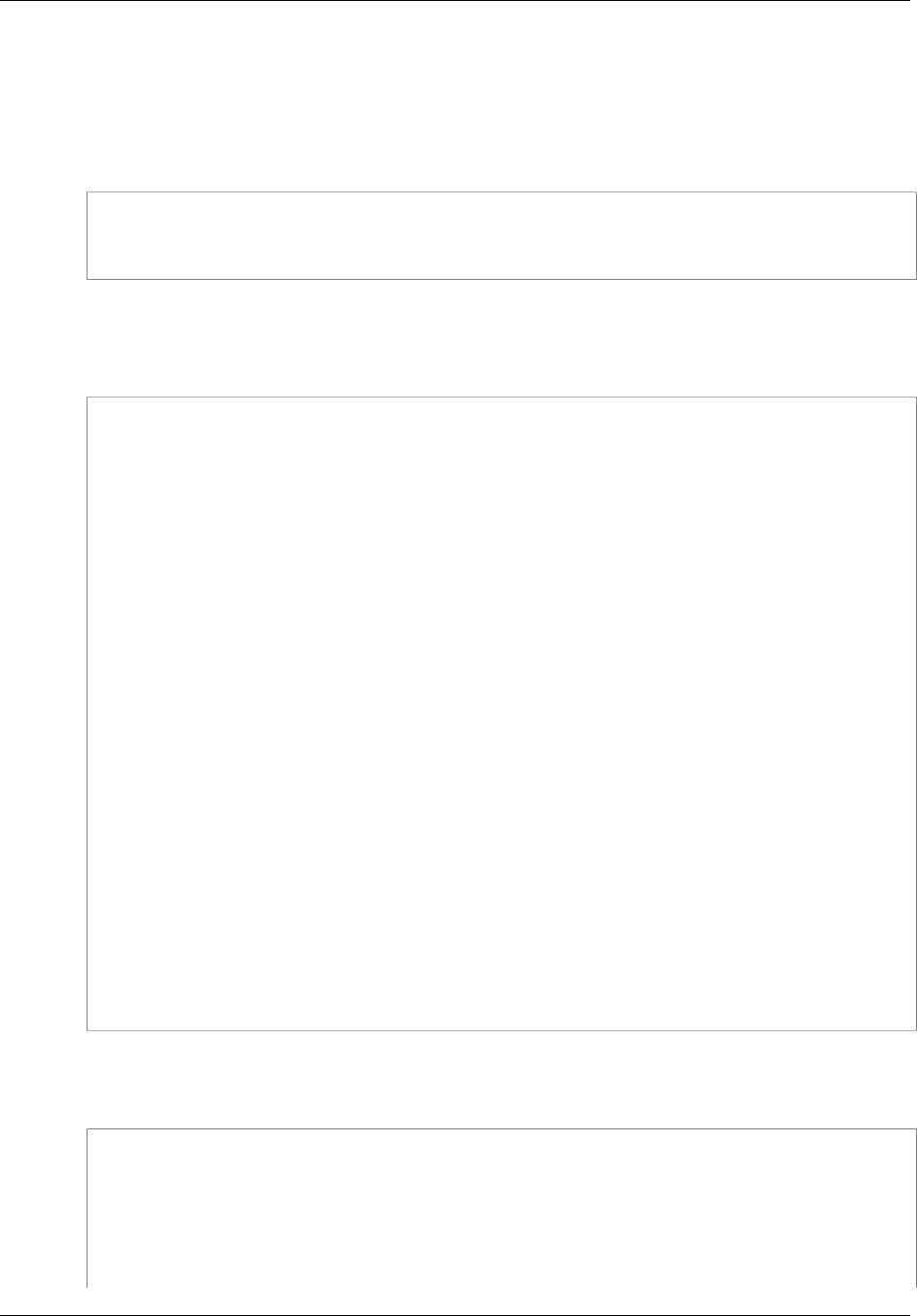

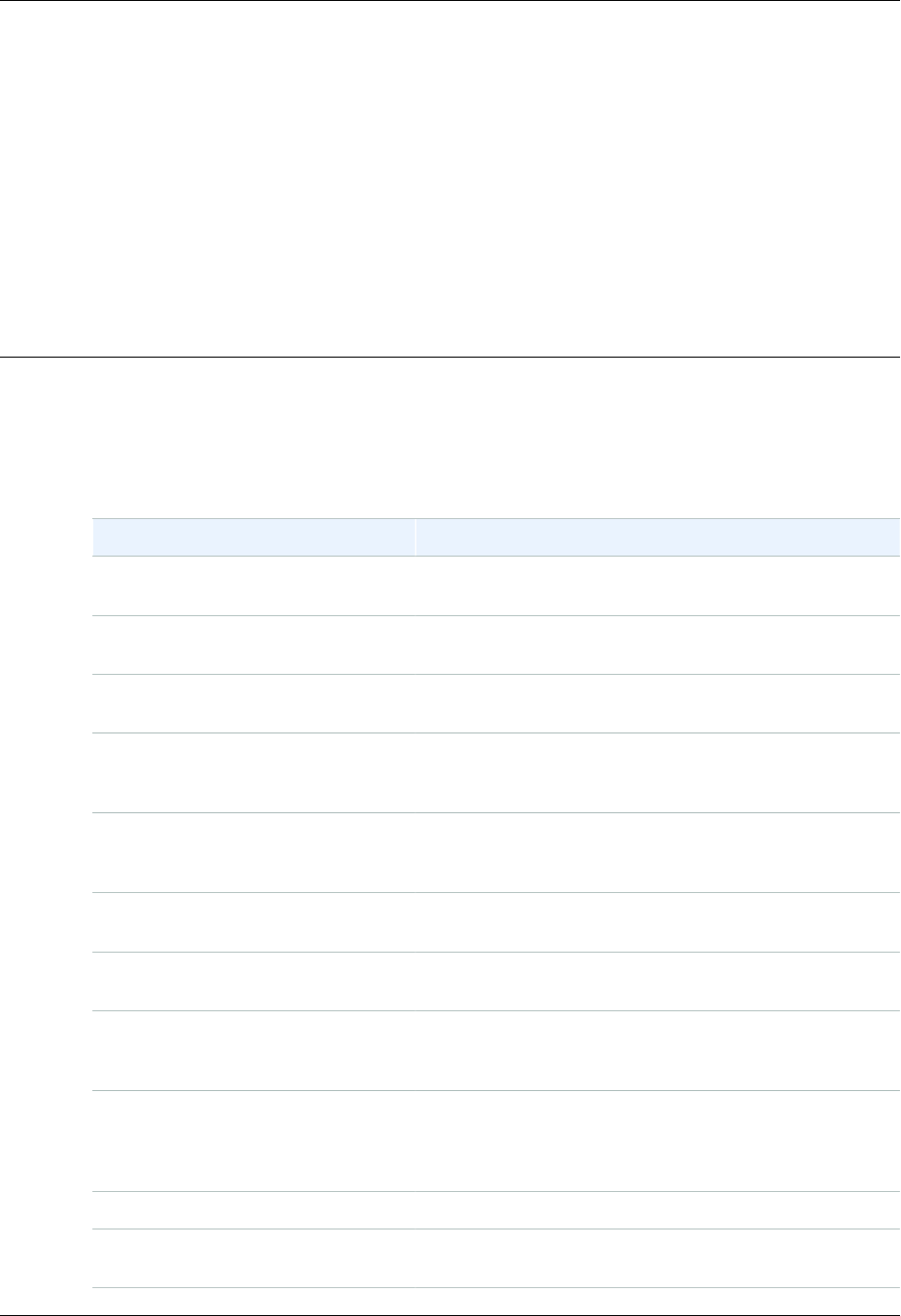

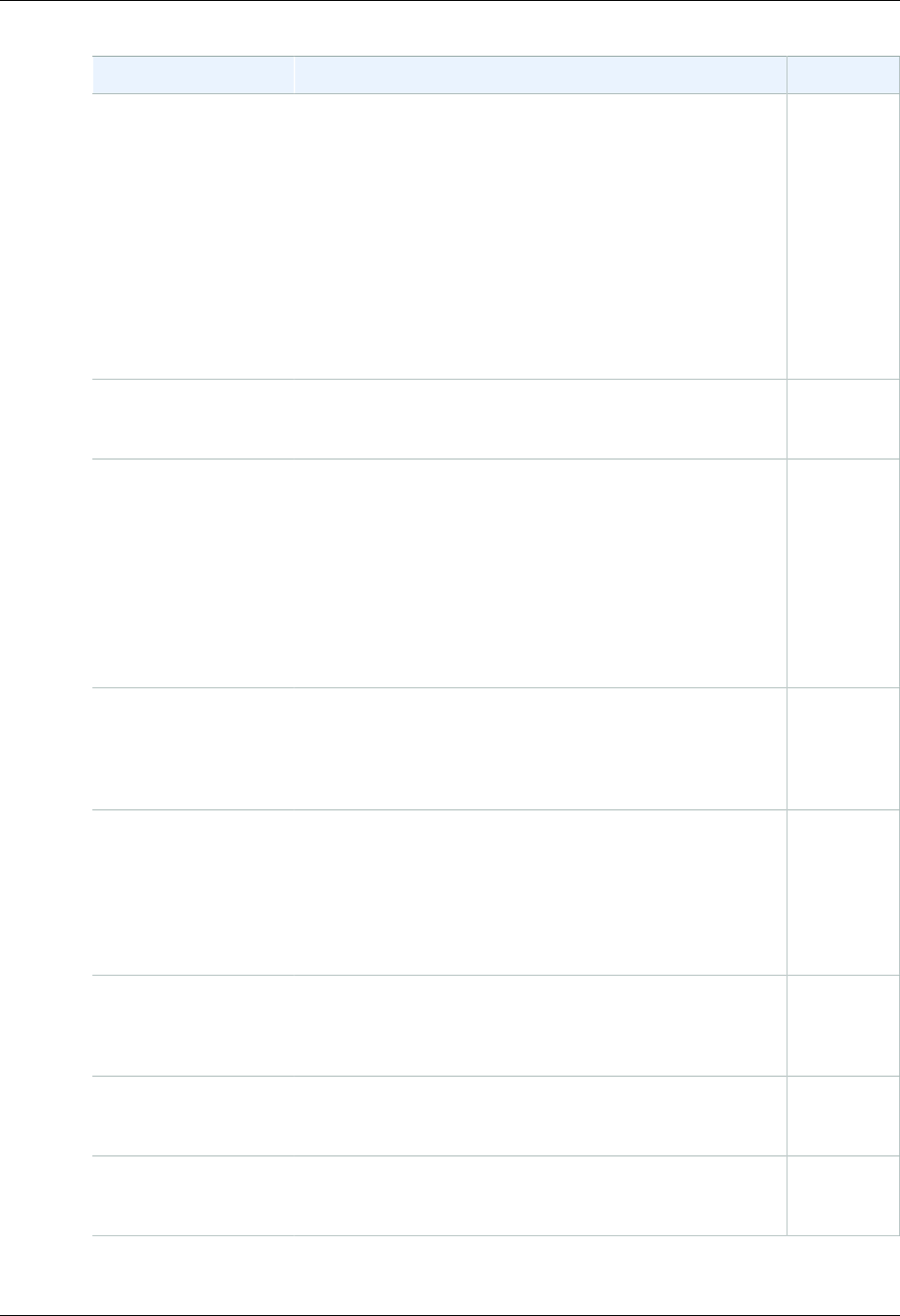

Table of Contents

What Is Amazon S3?

How Do I...?

Introduction

Overview of Amazon S3 and This Guide

Advantages to Amazon S3

Amazon S3 Concepts

Buckets

Objects

Keys

Regions

Amazon S3 Data Consistency Model

Features

Reduced Redundancy Storage

Bucket Policies

AWS Identity and Access Management

Access Control Lists

Versioning

Operations

Amazon S3 Application Programming Interfaces (API)

The REST Interface

The SOAP Interface

Paying for Amazon S3

Related Services

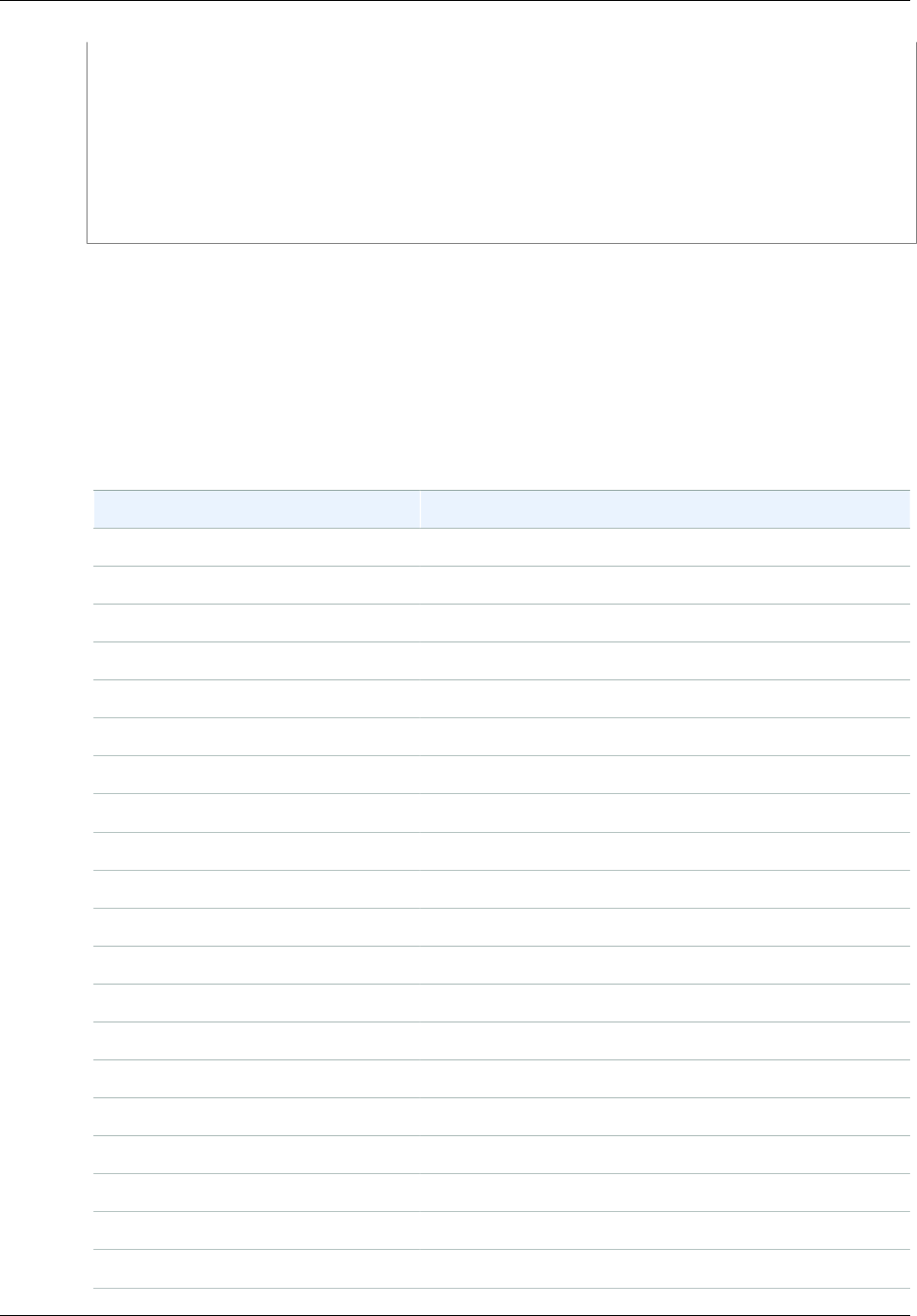

Making Requests

About Access Keys

AWS Account Access Keys

IAM User Access Keys

Temporary Security Credentials

Request Endpoints

Making Requests over IPv6

Getting Started with IPv6

Using IPv6 Addresses in IAM Policies

Testing IP Address Compatibility

Using Dual-Stack Endpoints

Making Requests Using the AWS SDKs

Using AWS Account or IAM User Credentials

Using IAM User Temporary Credentials

Using Federated User Temporary Credentials

Making Requests Using the REST API

Dual-Stack Endpoints (REST API)

Virtual Hosting of Buckets

Request Redirection and the REST API

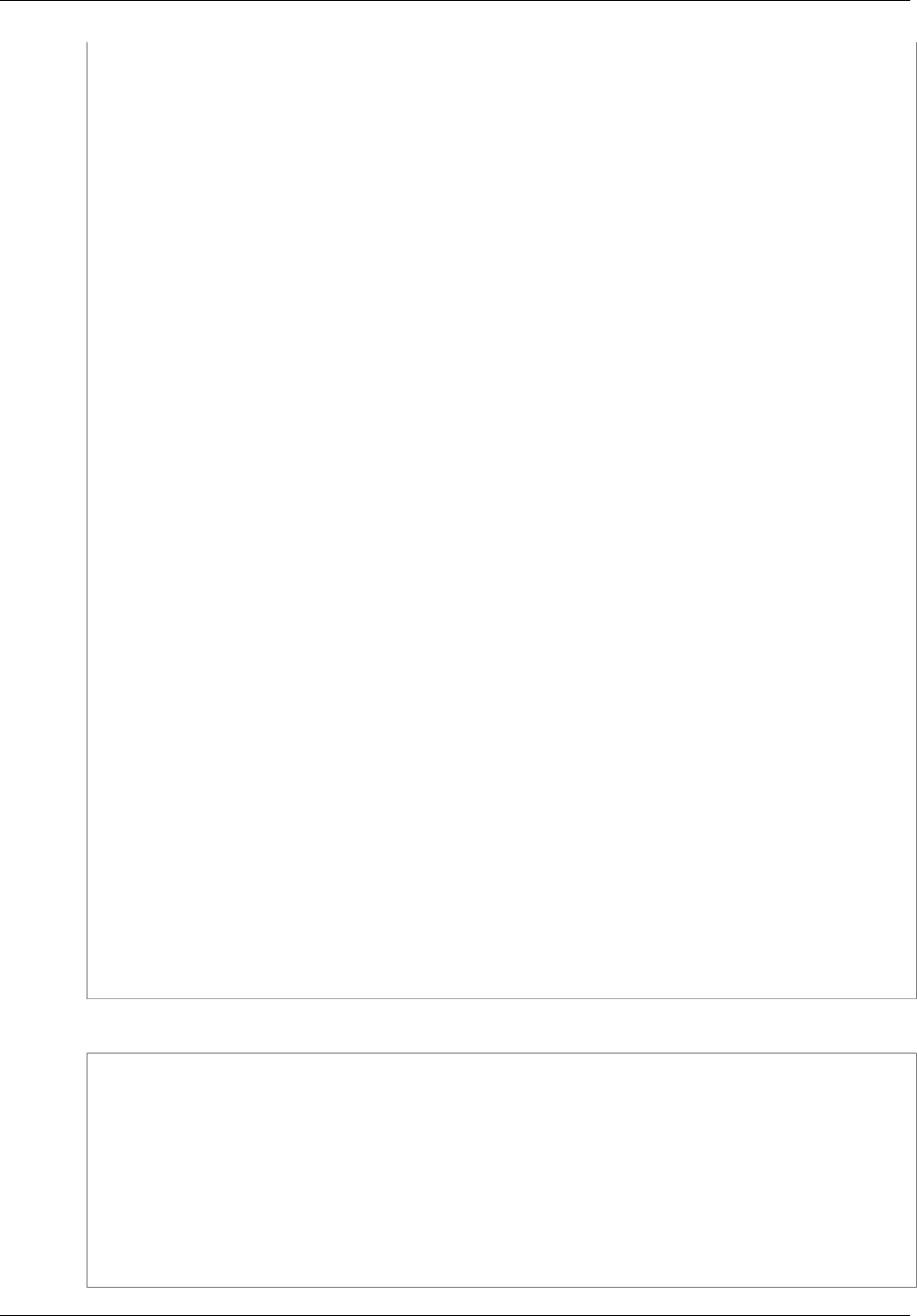

Buckets

Creating a Bucket

About Permissions

Accessing a Bucket

Bucket Configuration Options

Restrictions and Limitations

Rules for Naming

Examples of Creating a Bucket

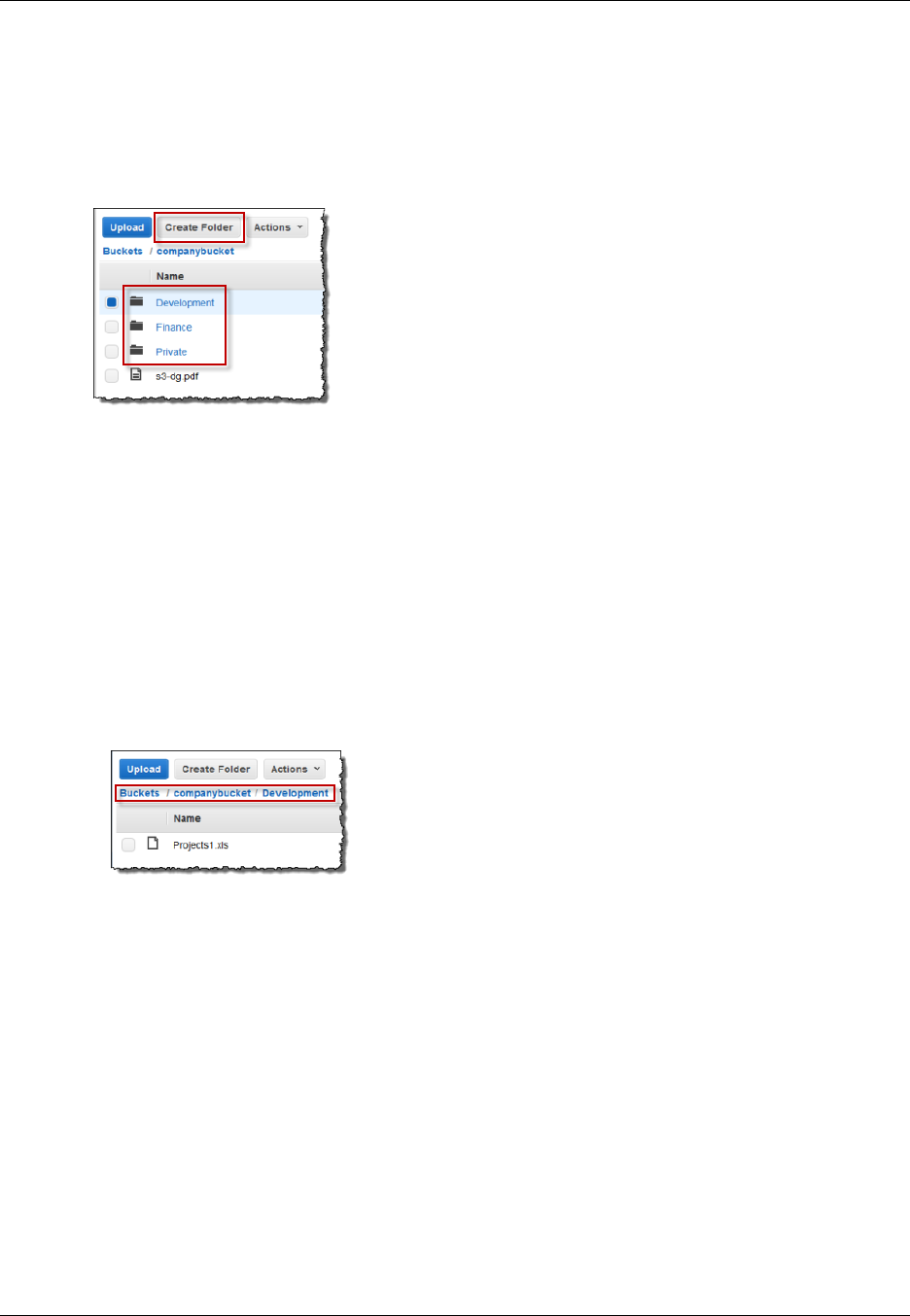

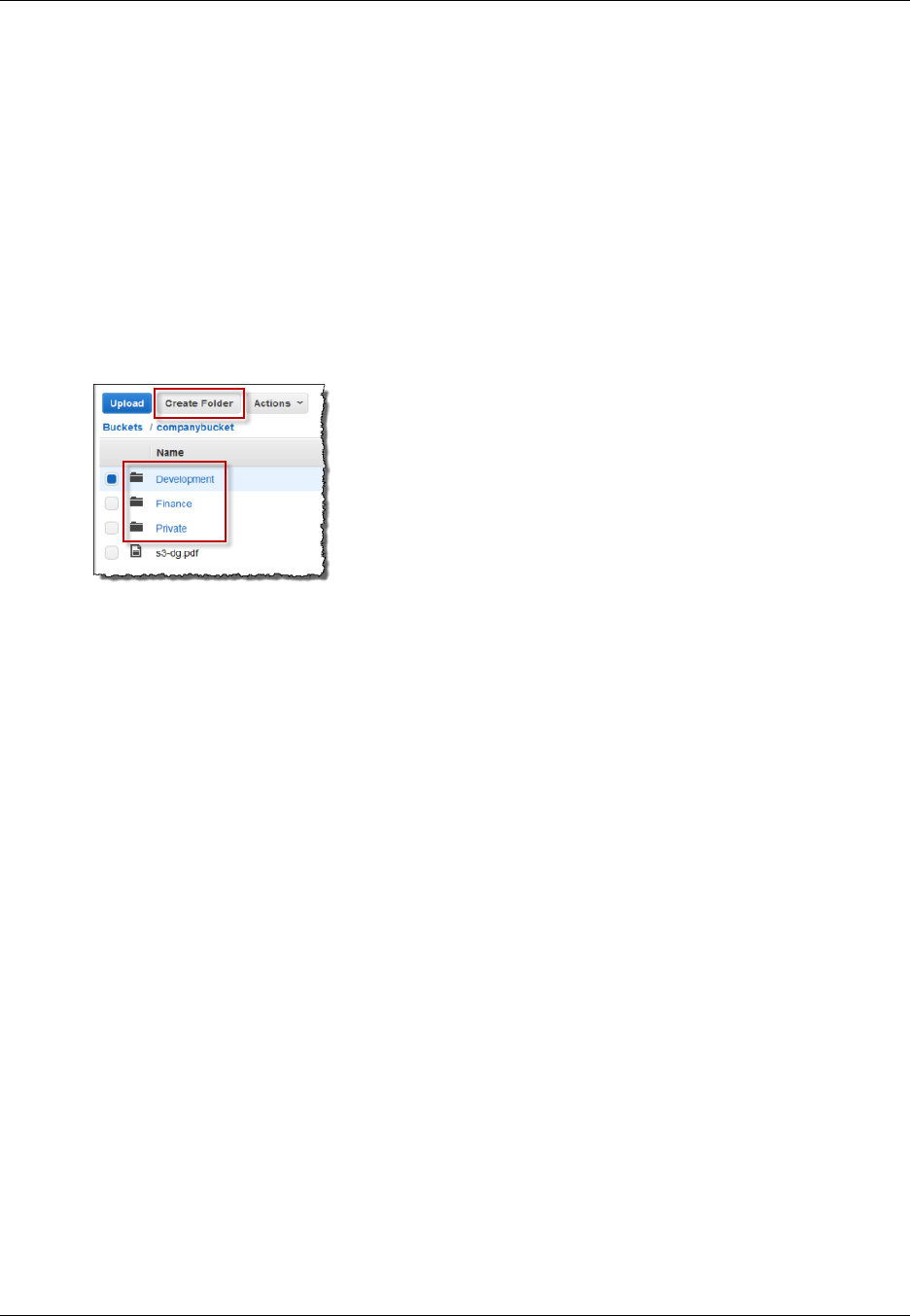

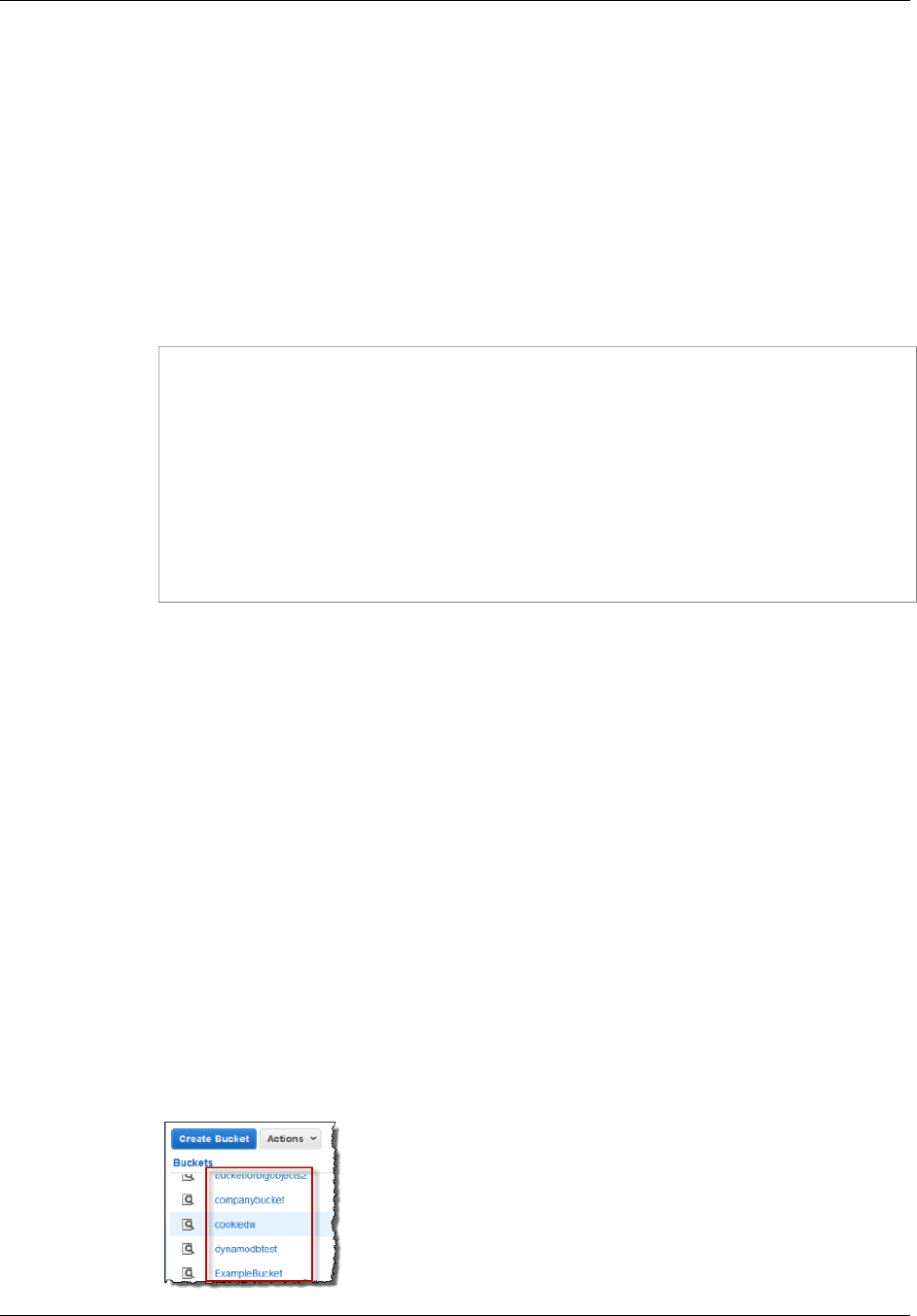

Using the Amazon S3 Console

Using the AWS SDK for Java

Using the AWS SDK for .NET

Using the AWS SDK for Ruby Version 2

Using Other AWS SDKs

Deleting or Emptying a Bucket

Delete a Bucket

Empty a Bucket

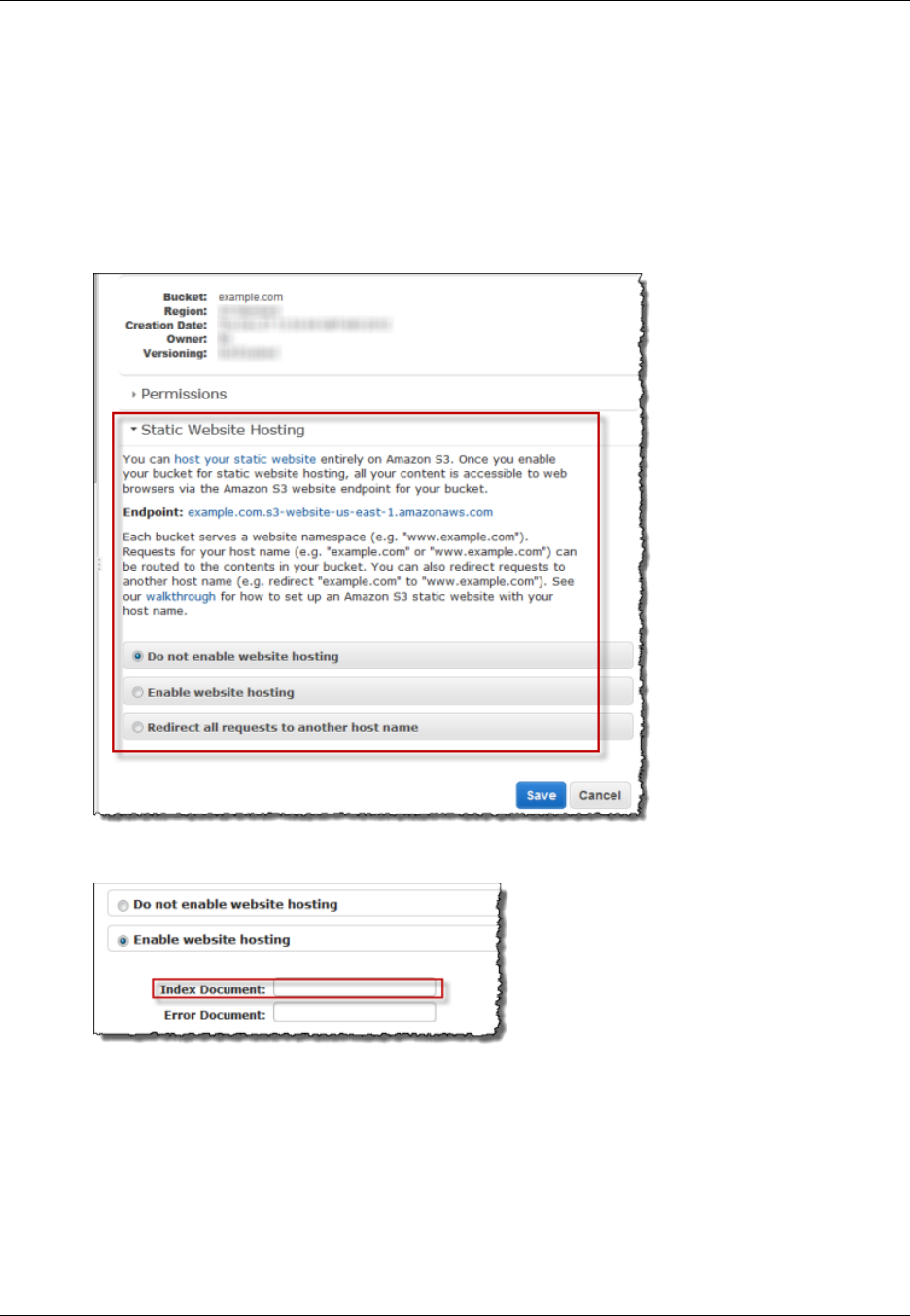

Bucket Website Configuration

Using the AWS Management Console

Using the SDK for Java

Using the AWS SDK for .NET

Using the SDK for PHP

Using the REST API

Transfer Acceleration

Why use Transfer Acceleration?

Getting Started

Requirements for Using Amazon S3 Transfer Acceleration

Transfer Acceleration Examples

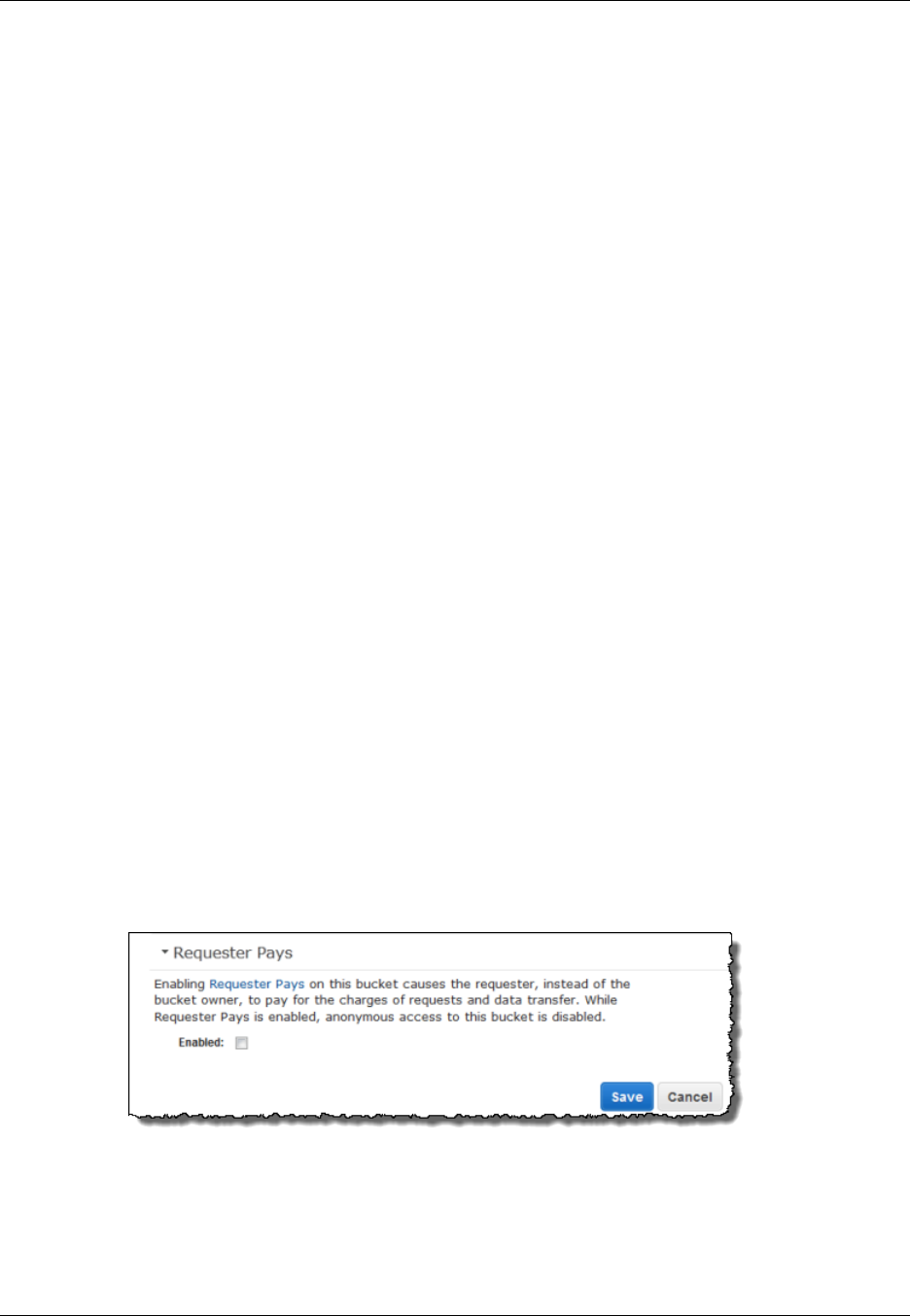

Requester Pays Buckets

Configure with the Console

Configure with the REST API

DevPay and Requester Pays

Charge Details

Access Control

Billing and Reporting

Cost Allocation Tagging

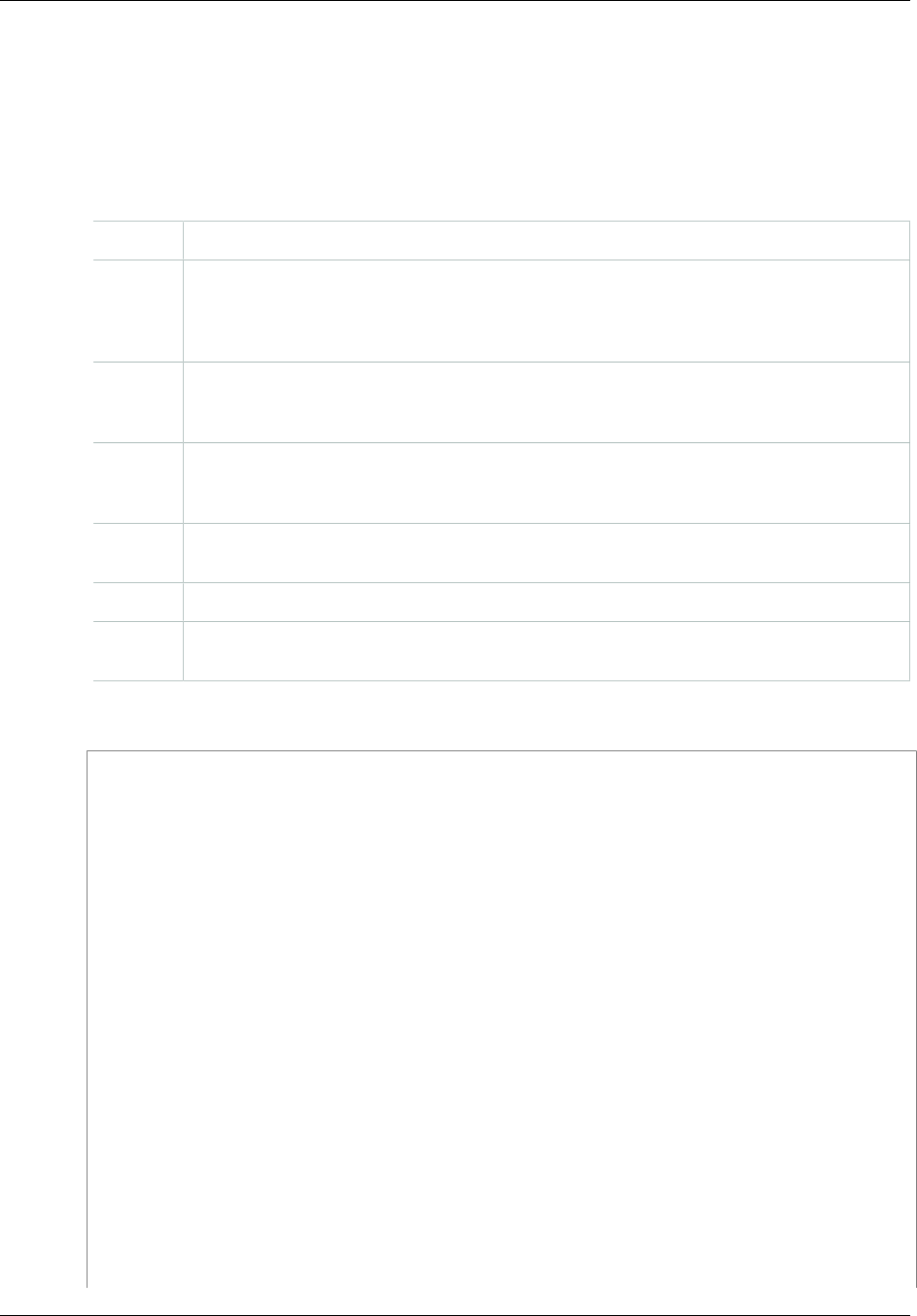

Objects

Object Key and Metadata

Object Keys

Object Metadata

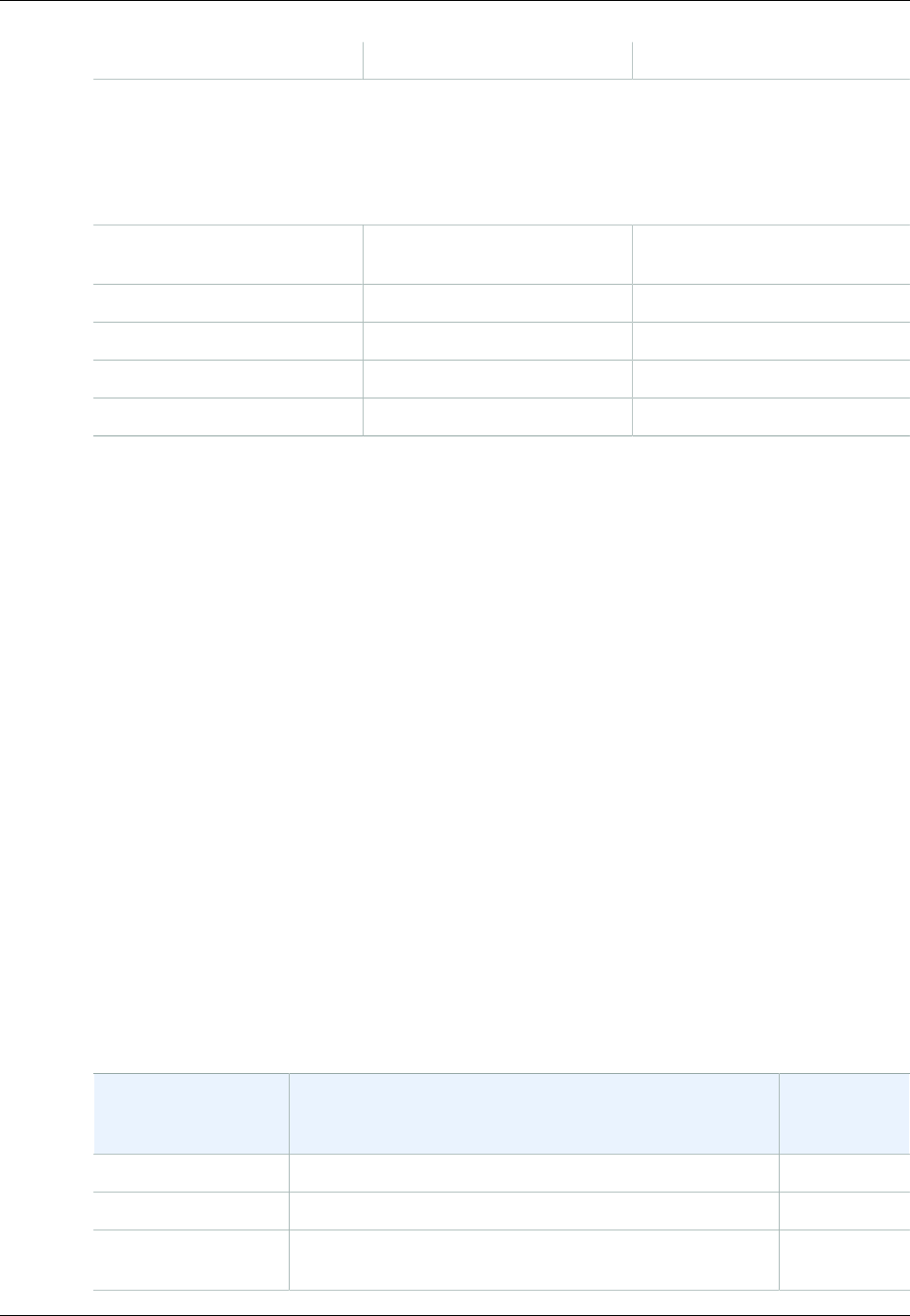

Storage Classes

Subresources

Versioning

Lifecycle Management

What Is Lifecycle Configuration?

How Do I Configure a Lifecycle?

Transitioning Objects: General Considerations

Expiring Objects: General Considerations

Lifecycle and Other Bucket Configurations

Lifecycle Configuration Elements

GLACIER Storage Class: Additional Considerations

Specifying a Lifecycle Configuration

Cross-Origin Resource Sharing (CORS)

Cross-Origin Resource Sharing: Use-case Scenarios

How Do I Configure CORS on My Bucket?

How Does Amazon S3 Evaluate the CORS Configuration On a Bucket?

Enabling CORS

Troubleshooting CORS

Operations on Objects

Getting Objects

Uploading Objects

Copying Objects

Listing Object Keys

Deleting Objects

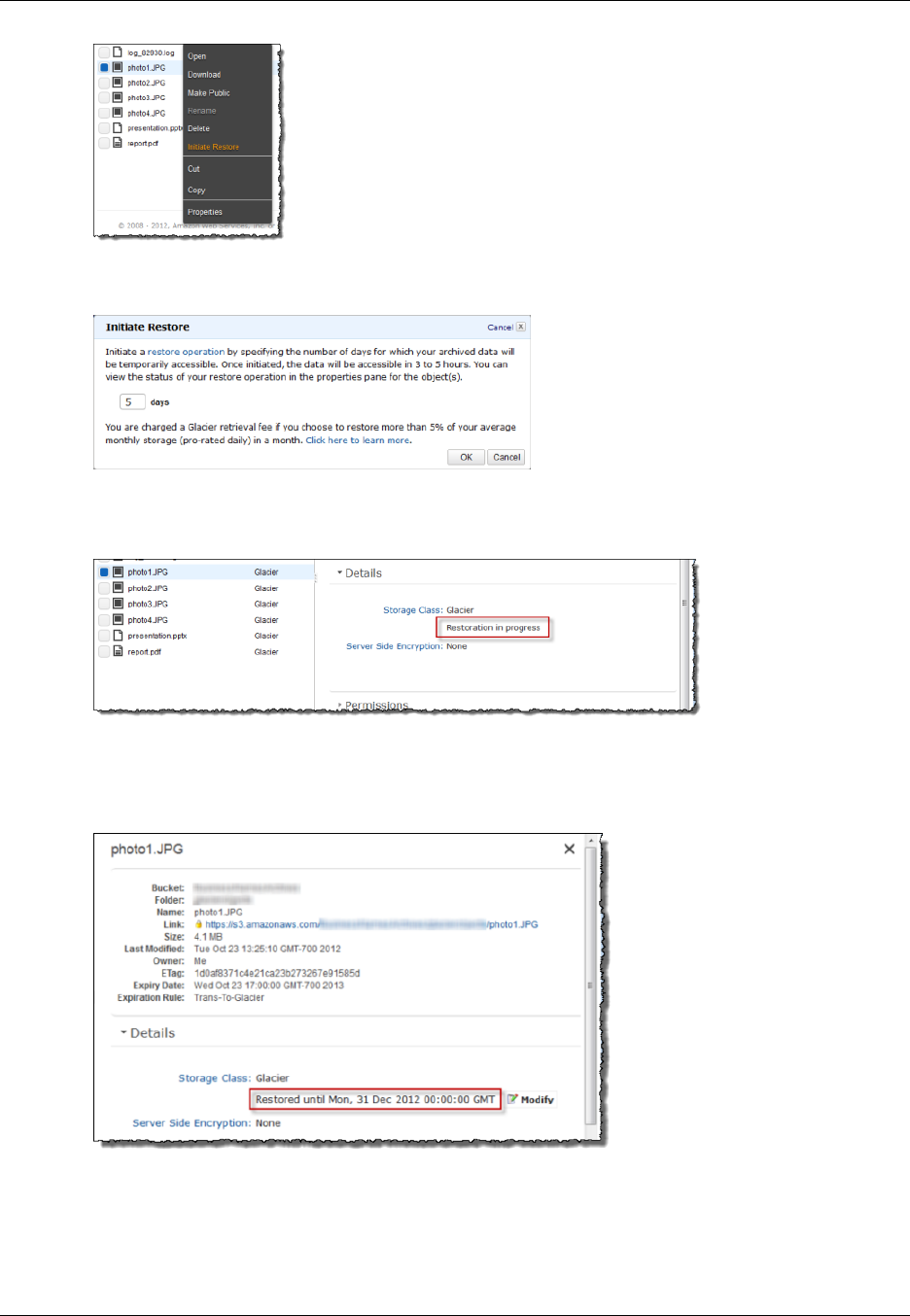

Restoring Archived Objects

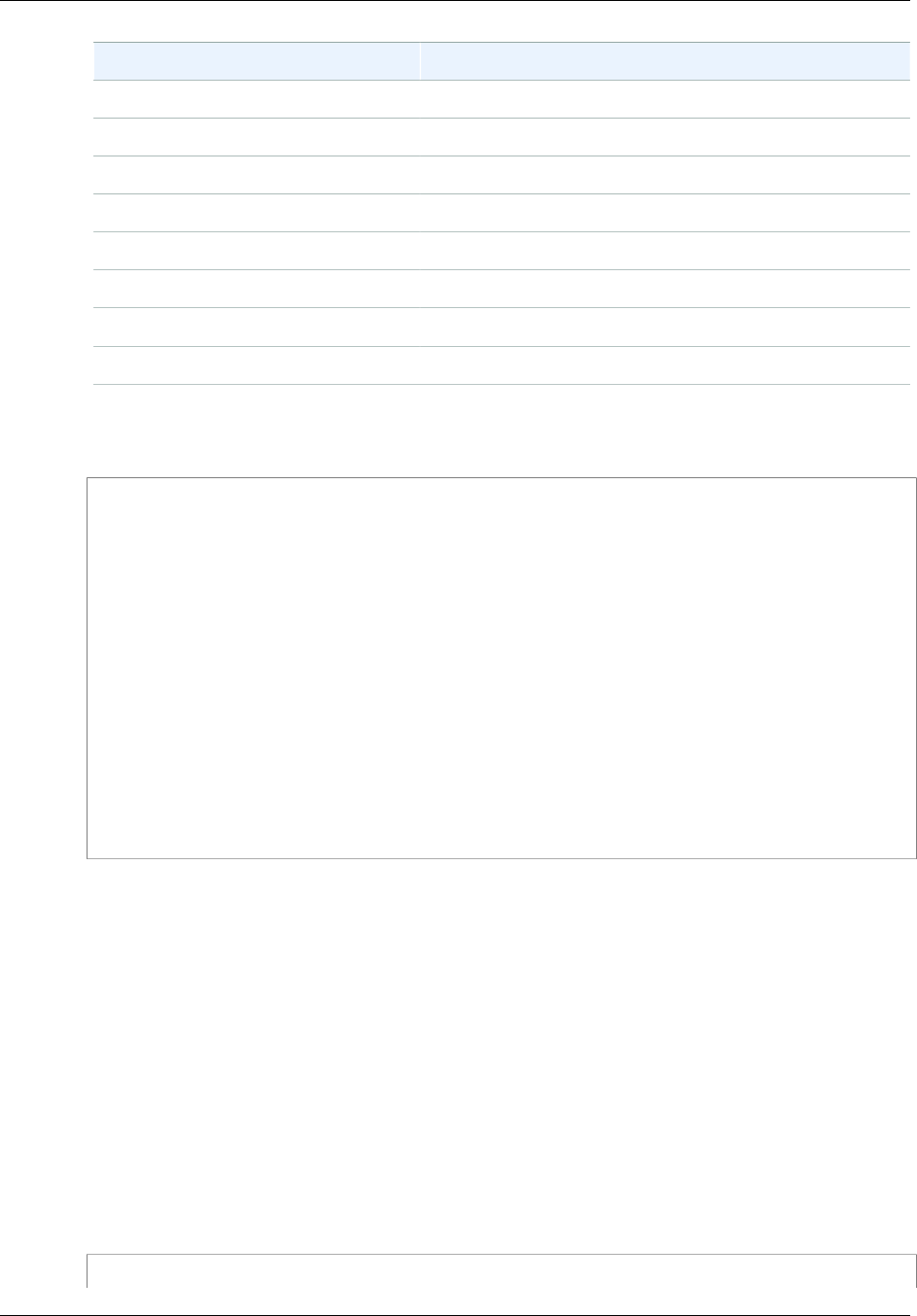

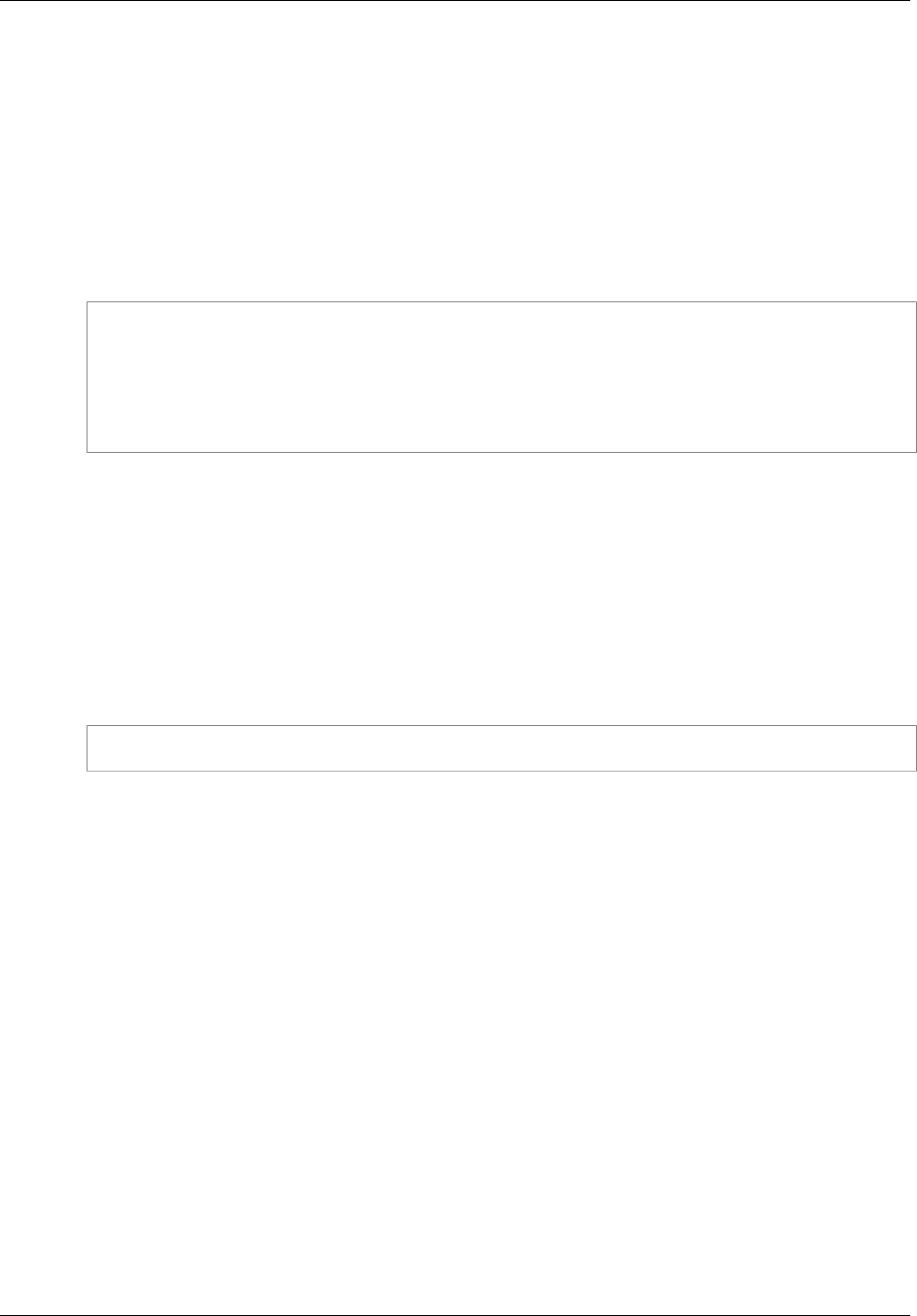

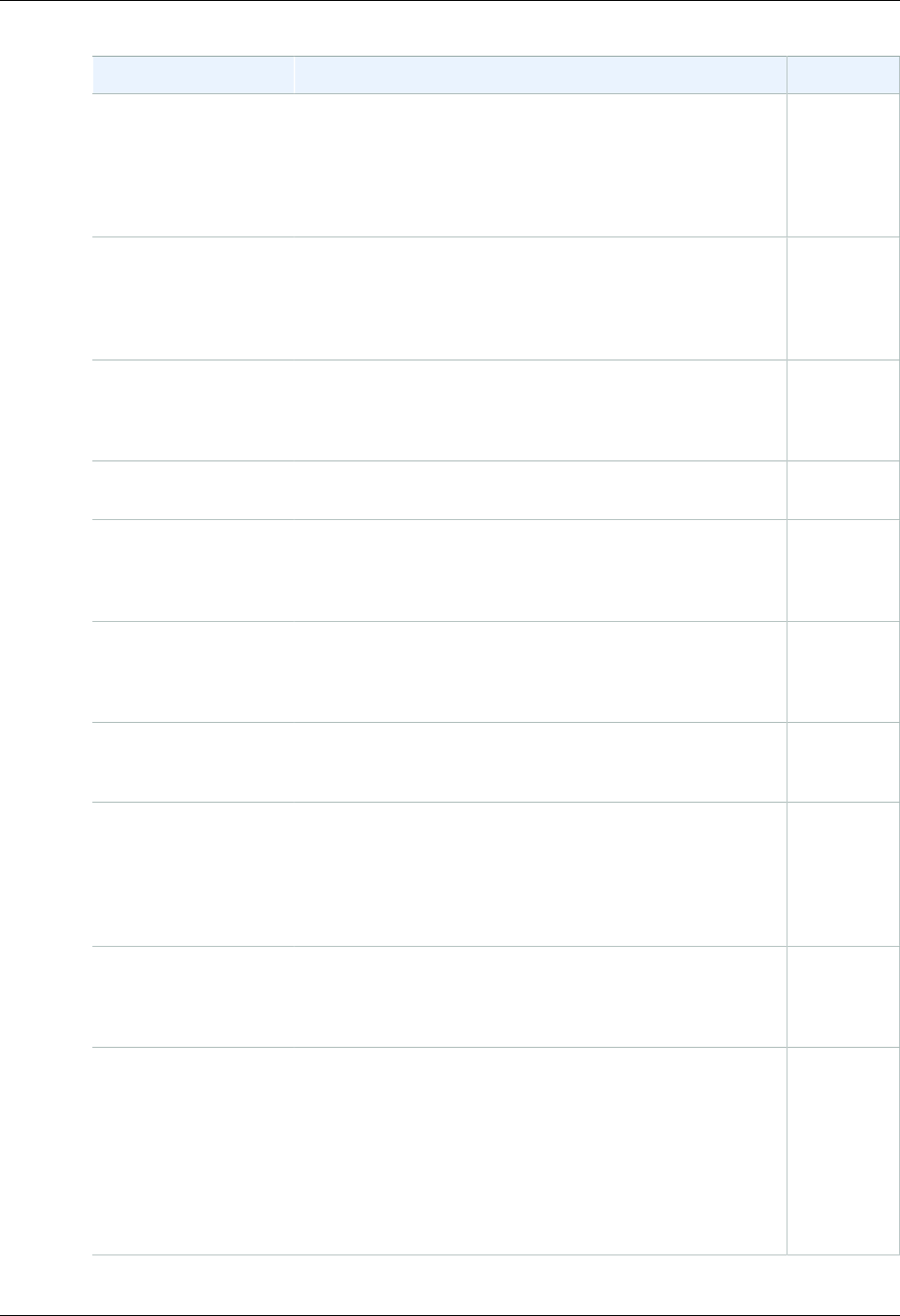

Managing Access

Introduction

Overview

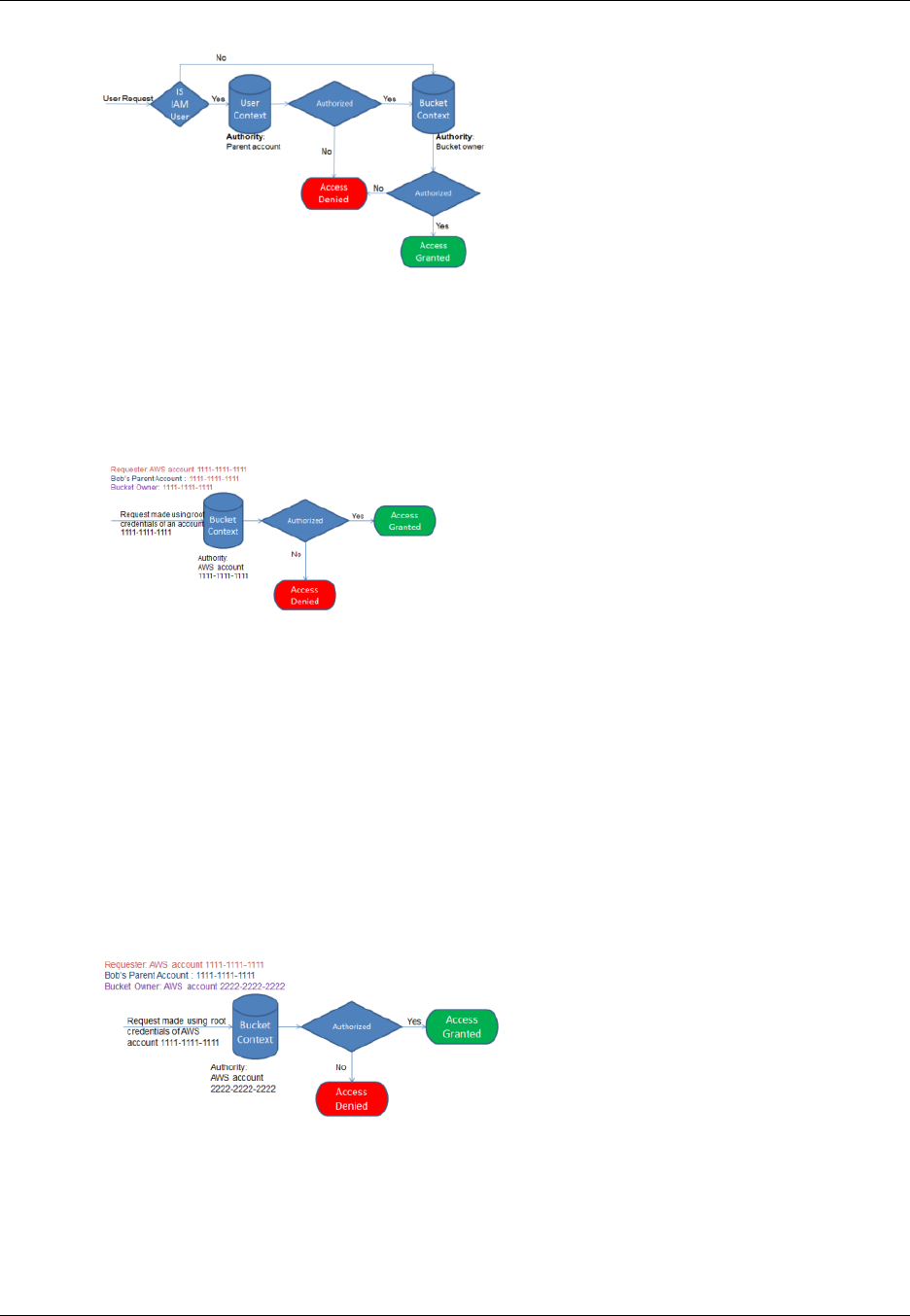

How Amazon S3 Authorizes a Request

Guidelines for Using the Available Access Policy Options

Example Walkthroughs: Managing Access

Using Bucket Policies and User Policies

Access Policy Language Overview

Bucket Policy Examples

User Policy Examples

Managing Access with ACLs

Access Control List (ACL) Overview

Managing ACLs

Protecting Data

Data Encryption

Server-Side Encryption

Client-Side Encryption

Reduced Redundancy Storage

Setting the Storage Class of an Object You Upload

Changing the Storage Class of an Object in Amazon S3

Versioning

How to Configure Versioning on a Bucket

MFA Delete

Related Topics

Examples

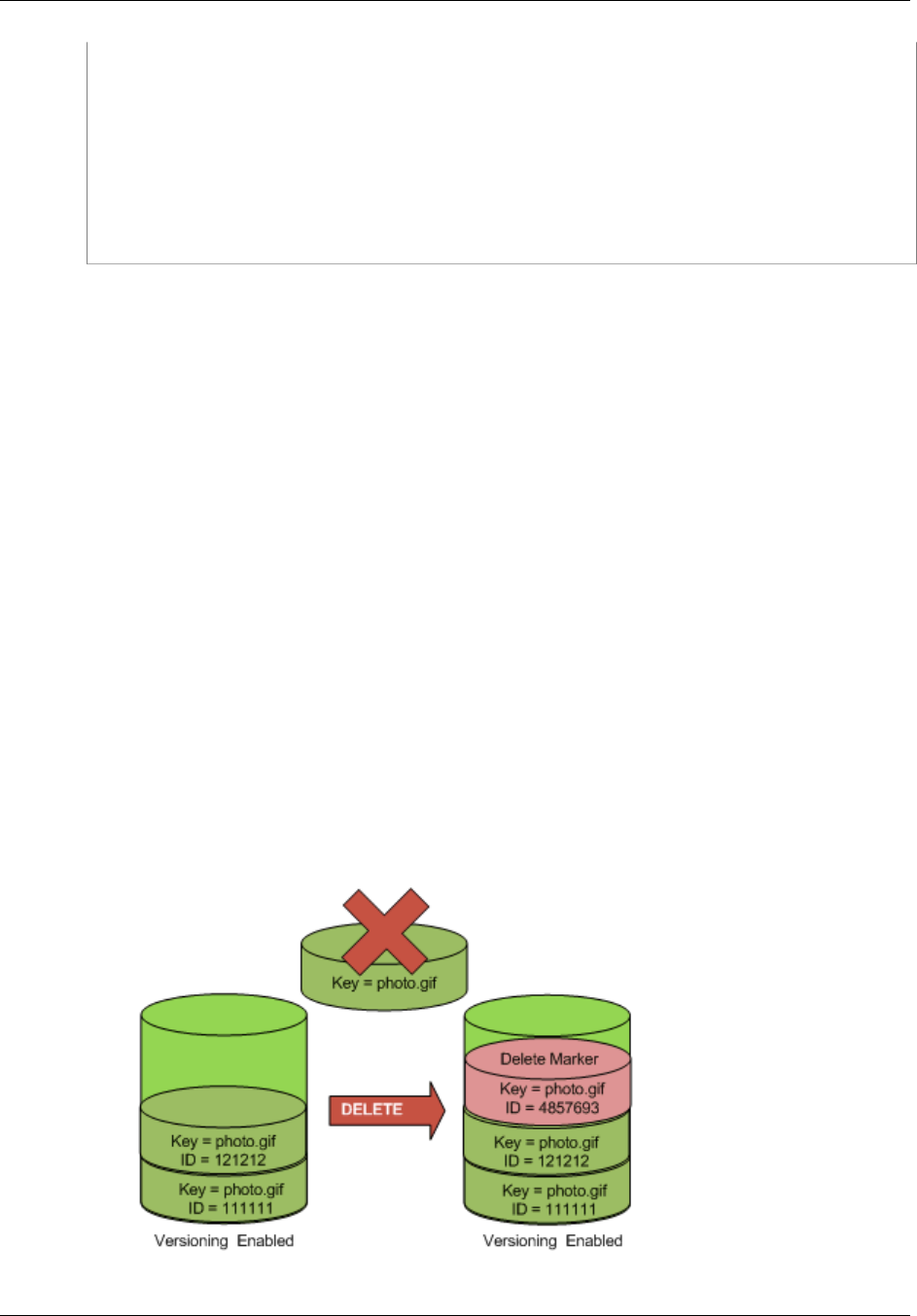

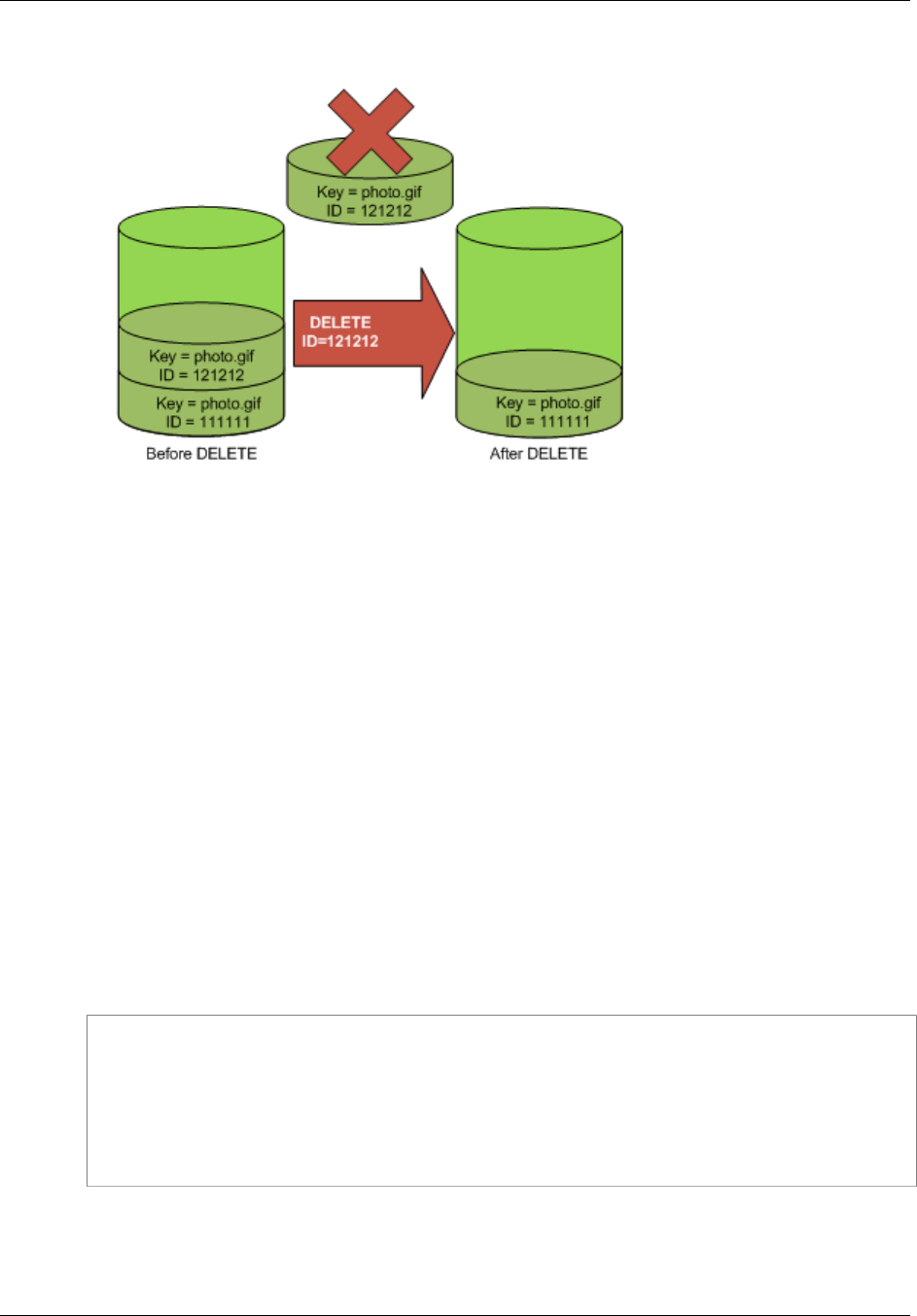

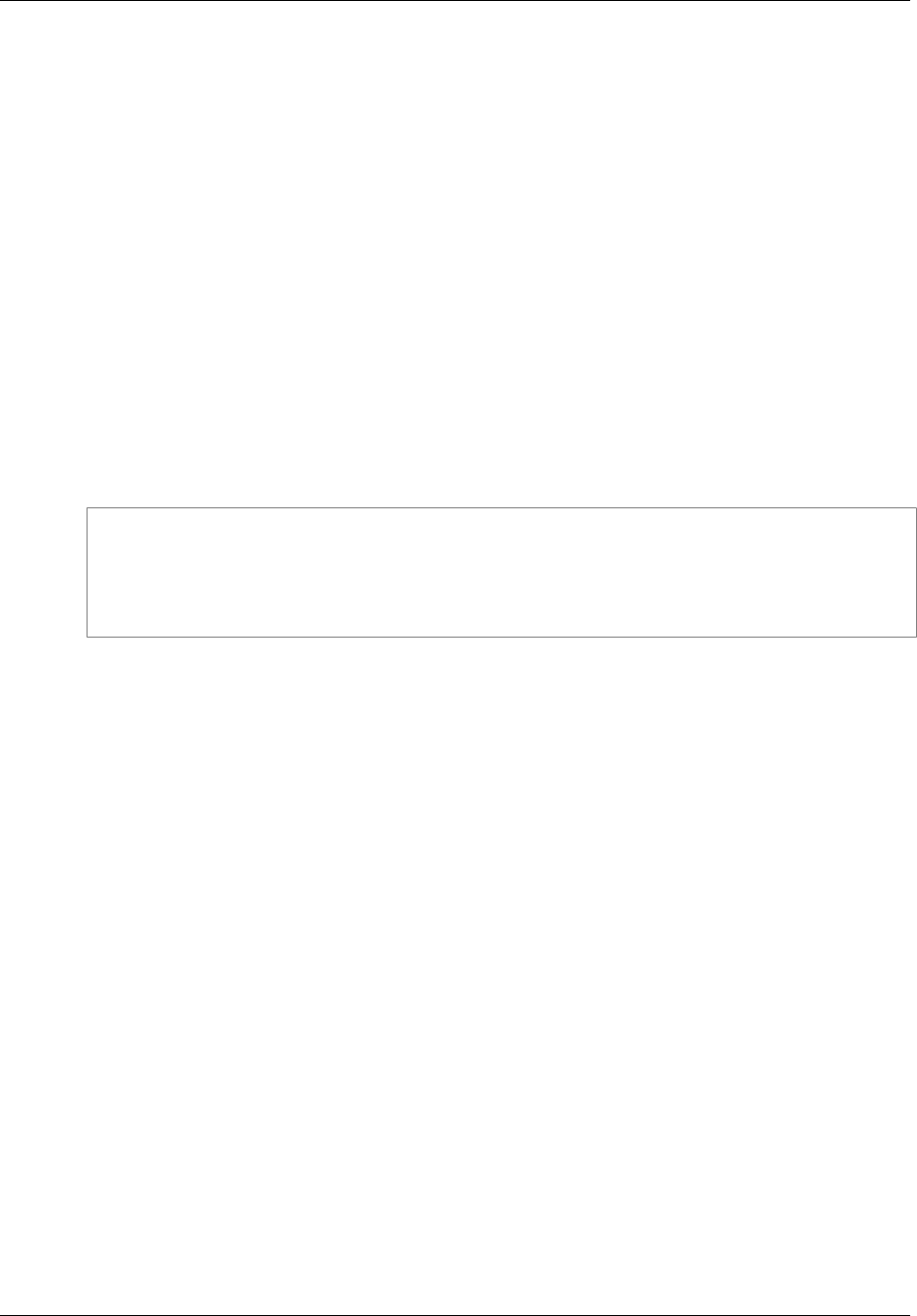

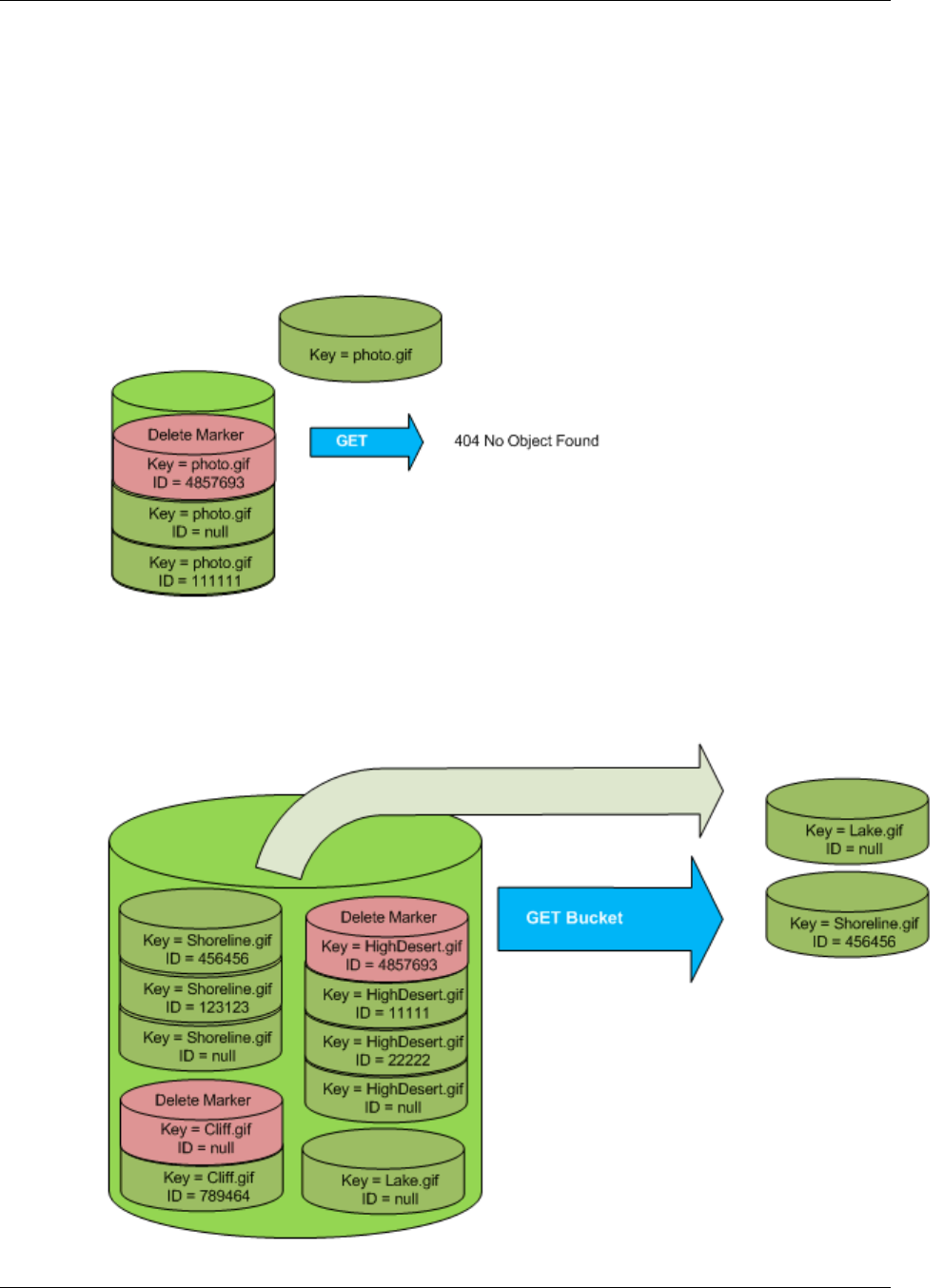

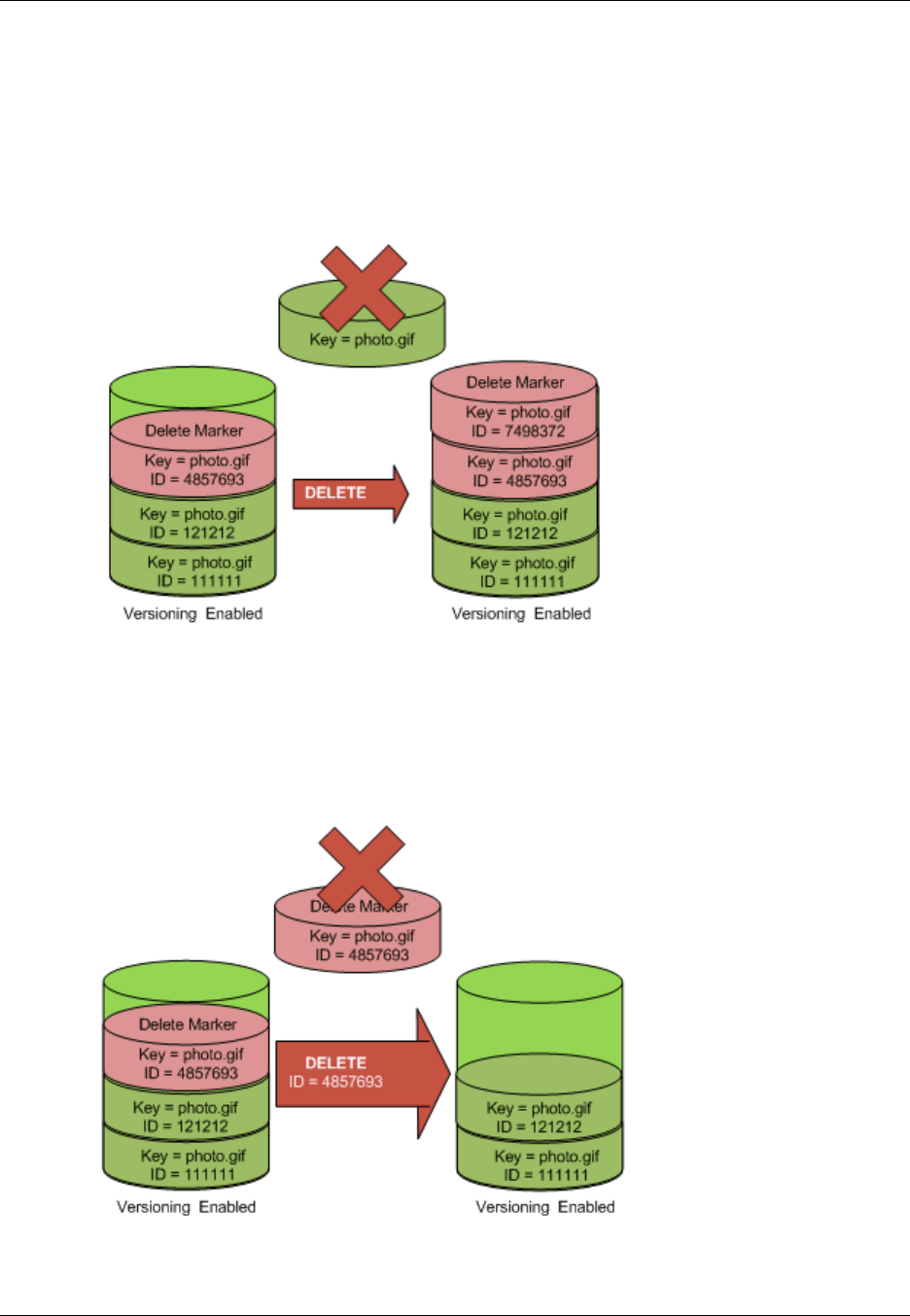

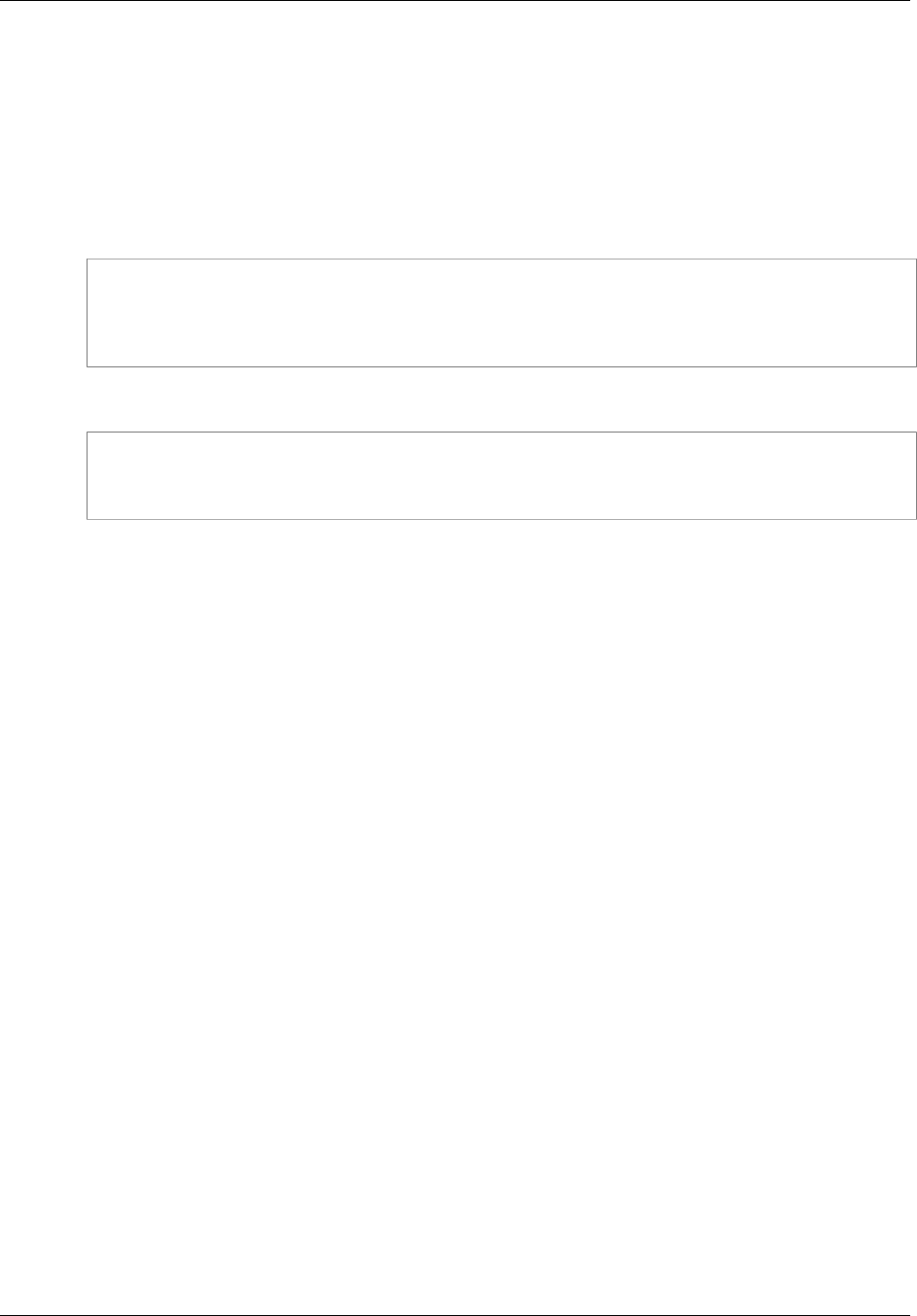

Managing Objects in a Versioning-Enabled Bucket

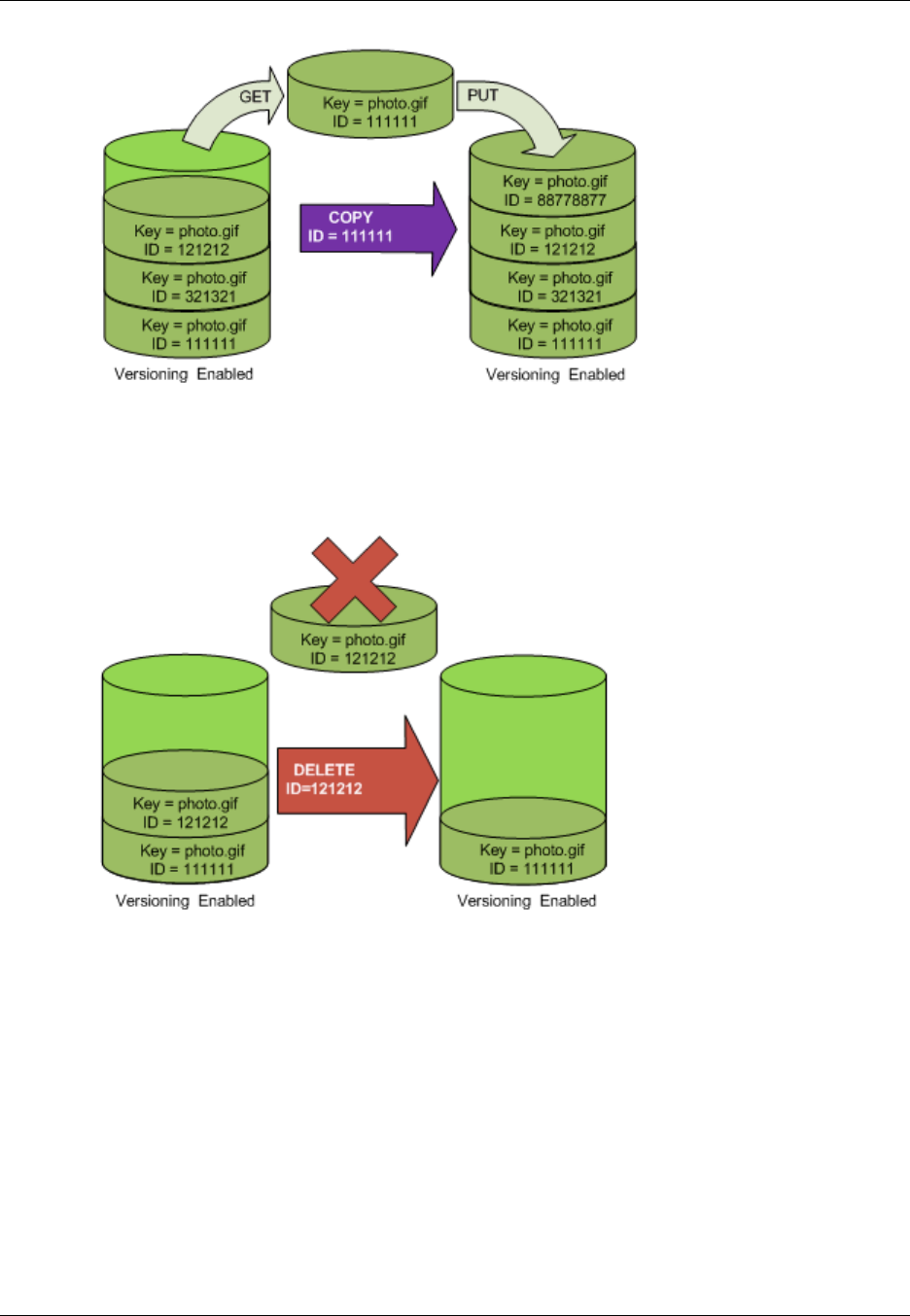

Managing Objects in a Versioning-Suspended Bucket

Hosting a Static Website

Website Endpoints

Key Differences Between the Amazon Website and the REST API Endpoint

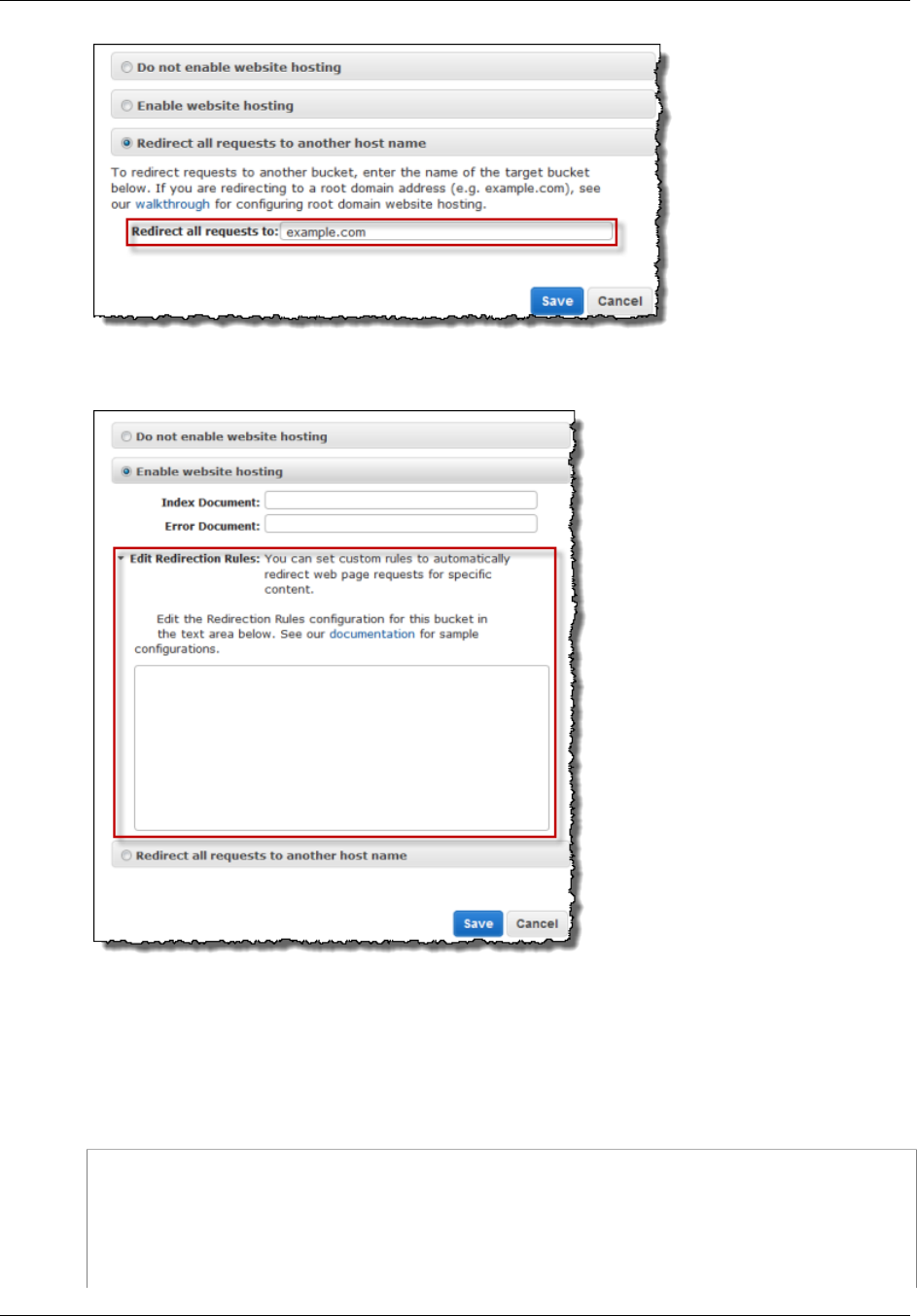

Configure a Bucket for Website Hosting

Overview

Syntax for Specifying Routing Rules

Index Document Support

Custom Error Document Support

Configuring a Redirect

Permissions Required for Website Access

Example Walkthroughs

Example: Setting Up a Static Website

Example: Setting Up a Static Website Using a Custom Domain

Notifications

Overview

How to Enable Event Notifications

Event Notification Types and Destinations

Supported Event Types

Supported Destinations

Configuring Notifications with Object Key Name Filtering

Examples of Valid Notification Configurations with Object Key Name Filtering

Examples of Notification Configurations with Invalid Prefix/Suffix Overlapping

Granting Permissions to Publish Event Notification Messages to a Destination

Granting Permissions to Invoke an AWS Lambda Function

Granting Permissions to Publish Messages to an SNS Topic or an SQS Queue

Example Walkthrough 1

Walkthrough Summary

Step 1: Create an Amazon SNS Topic

Step 2: Create an Amazon SQS Queue ........................................................................ 484

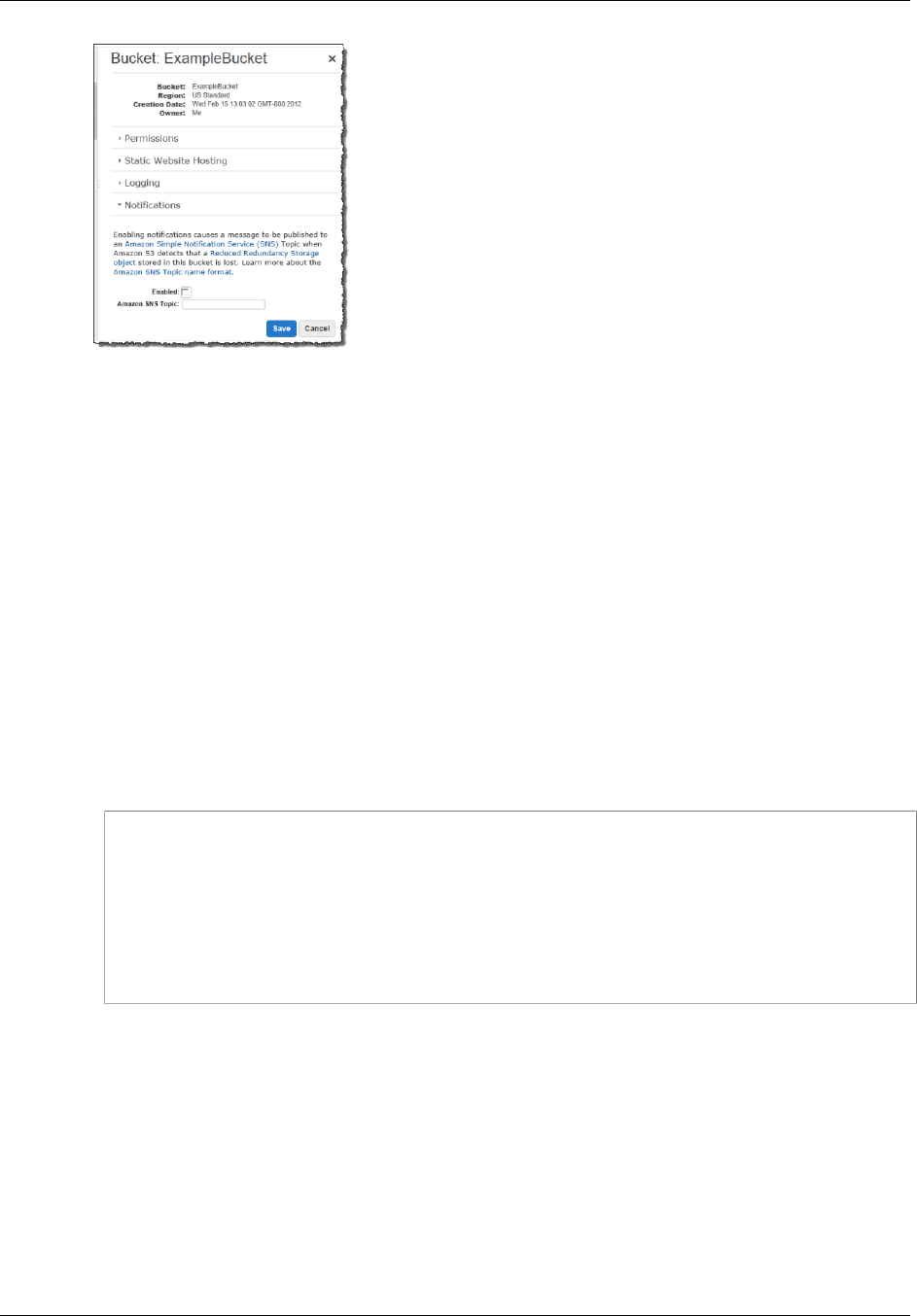

Step 3: Add a Notification Configuration to Your Bucket ................................................... 485

Step 4: Test the Setup ............................................................................................... 489

Example Walkthrough 2 ..................................................................................................... 489

Event Message Structure .................................................................................................... 489

Cross-Region Replication ............................................................................................................ 492

Use-case Scenarios ........................................................................................................... 492

Requirements .................................................................................................................... 493

Related Topics .................................................................................................................. 493

What Is and Is Not Replicated ............................................................................................. 493

What Is Replicated .................................................................................................... 493

What Is Not Replicated .............................................................................................. 494

Related Topics .......................................................................................................... 495

How to Set Up .................................................................................................................. 495

Create an IAM Role ................................................................................................... 495

Add Replication Configuration ...................................................................................... 497

Walkthrough 1: Same AWS Account ............................................................................ 500

Walkthrough 2: Different AWS Accounts ....................................................................... 501

Using the Console ..................................................................................................... 505

Using the AWS SDK for Java ...................................................................................... 505

Using the AWS SDK for .NET ..................................................................................... 507

Replication Status Information ............................................................................................. 509

Related Topics .......................................................................................................... 510

Troubleshooting ................................................................................................................. 511

Related Topics .......................................................................................................... 511

Replication and Other Bucket Configurations ......................................................................... 511

Lifecycle Configuration and Object Replicas .................................................................. 512

Versioning Configuration and Replication Configuration ................................................... 512

Logging Configuration and Replication Configuration ....................................................... 512

Related Topics .......................................................................................................... 512

Request Routing ....................................................................................................................... 513

Request Redirection and the REST API ................................................................................ 513

Overview .................................................................................................................. 513

DNS Routing ............................................................................................................ 514

Temporary Request Redirection ................................................................................... 514

Permanent Request Redirection .................................................................................. 516

DNS Considerations ........................................................................................................... 516

Performance Optimization ........................................................................................................... 518

Request Rate and Performance Considerations ..................................................................... 518

Workloads with a Mix of Request Types ....................................................................... 519

GET-Intensive Workloads ........................................................................................... 521

TCP Window Scaling ......................................................................................................... 521

TCP Selective Acknowledgement ......................................................................................... 522

Monitoring with Amazon CloudWatch ............................................................................................ 523

Amazon S3 CloudWatch Metrics .......................................................................................... 523

Amazon S3 CloudWatch Dimensions .................................................................................... 524

Accessing Metrics in Amazon CloudWatch ............................................................................ 524

Related Resources ............................................................................................................ 525

Logging API Calls with AWS CloudTrail ........................................................................................ 526

Amazon S3 Information in CloudTrail .................................................................................... 526

Using CloudTrail Logs with Amazon S3 Server Access Logs and CloudWatch Logs ...................... 528

Understanding Amazon S3 Log File Entries ........................................................................... 528

Related Resources ............................................................................................................ 530

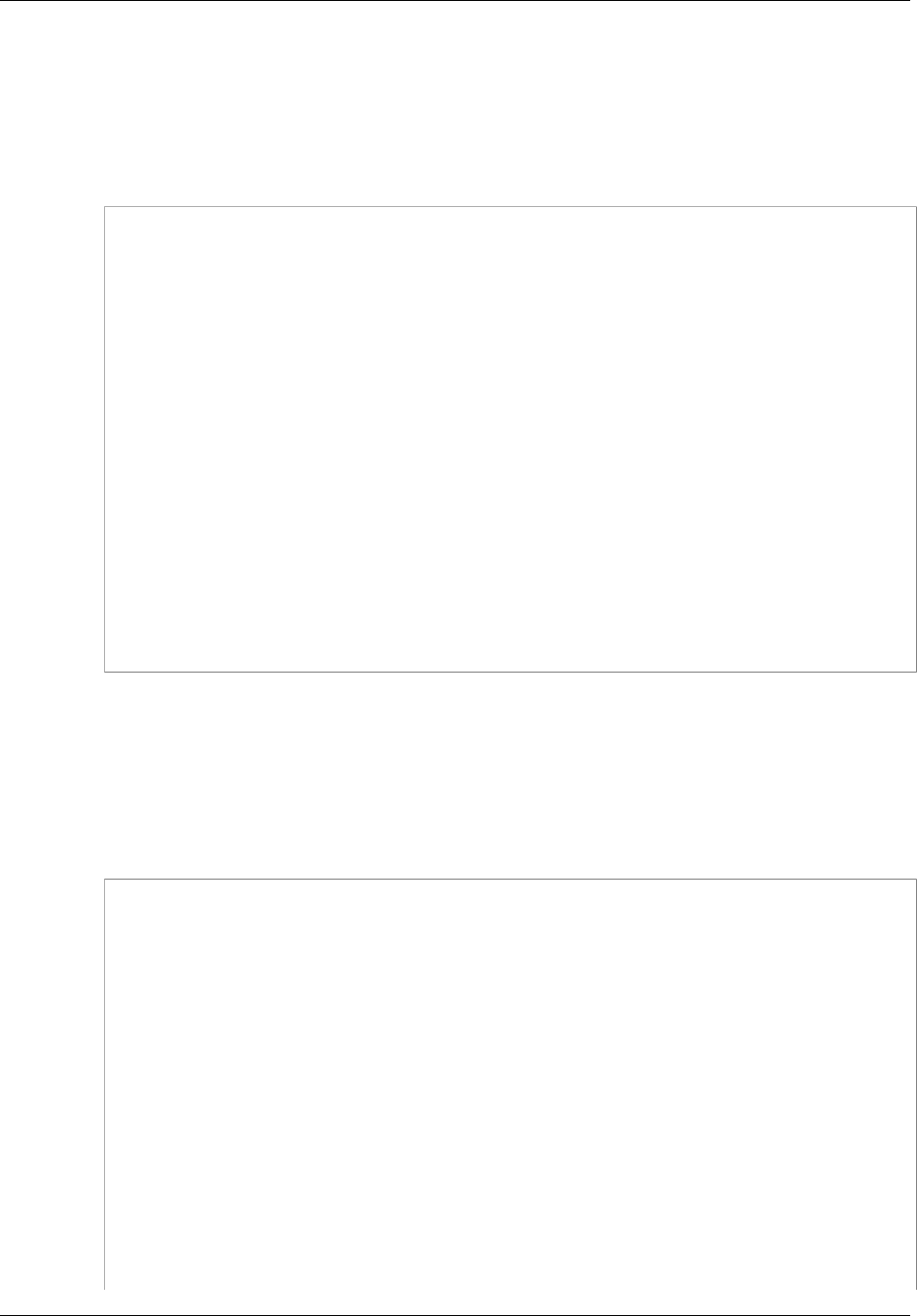

BitTorrent ................................................................................................................................. 531

How You are Charged for BitTorrent Delivery ........................................................................ 531

Using BitTorrent to Retrieve Objects Stored in Amazon S3 ...................................................... 532

Publishing Content Using Amazon S3 and BitTorrent .............................................................. 533

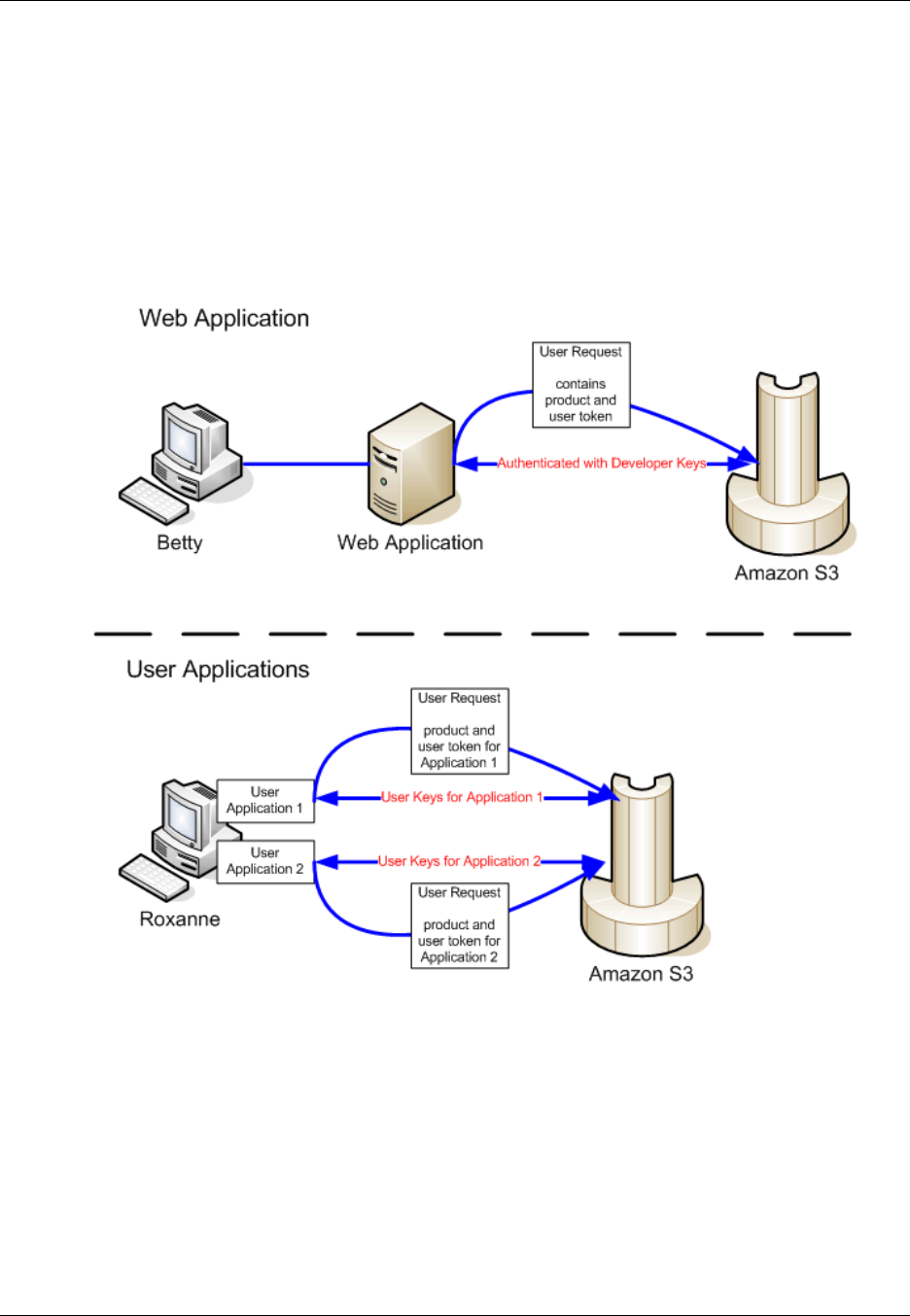

Amazon DevPay ....................................................................................................................... 534

Amazon S3 Customer Data Isolation .................................................................................... 534

Example ................................................................................................................... 535

Amazon DevPay Token Mechanism ..................................................................................... 535

Amazon S3 and Amazon DevPay Authentication .................................................................... 535

Amazon S3 Bucket Limitation .............................................................................................. 536

Amazon S3 and Amazon DevPay Process ............................................................................ 537

Additional Information ......................................................................................................... 537

Error Handling ........................................................................................................................... 538

The REST Error Response ................................................................................................. 538

Response Headers .................................................................................................... 539

Error Response ......................................................................................................... 539

The SOAP Error Response ................................................................................................. 540

Amazon S3 Error Best Practices .......................................................................................... 540

Retry InternalErrors .................................................................................................... 540

Tune Application for Repeated SlowDown errors ............................................................ 540

Isolate Errors ............................................................................................................ 541

Troubleshooting Amazon S3 ....................................................................................................... 542

General: Getting my Amazon S3 request IDs ......................................................................... 542

Using HTTP .............................................................................................................. 542

Using a Web Browser ................................................................................................ 543

Using an AWS SDK ................................................................................................... 543

Using the AWS CLI ................................................................................................... 544

Using Windows PowerShell ......................................................................................... 544

Related Topics .................................................................................................................. 544

Server Access Logging .............................................................................................................. 546

Overview .......................................................................................................................... 546

Log Object Key Format .............................................................................................. 547

How are Logs Delivered? ........................................................................................... 547

Best Effort Server Log Delivery ................................................................................... 547

Bucket Logging Status Changes Take Effect Over Time .................................................. 548

Related Topics .................................................................................................................. 548

Enabling Logging Using the Console .................................................................................... 548

Enabling Logging Programmatically ...................................................................................... 550

Enabling logging ........................................................................................................ 550

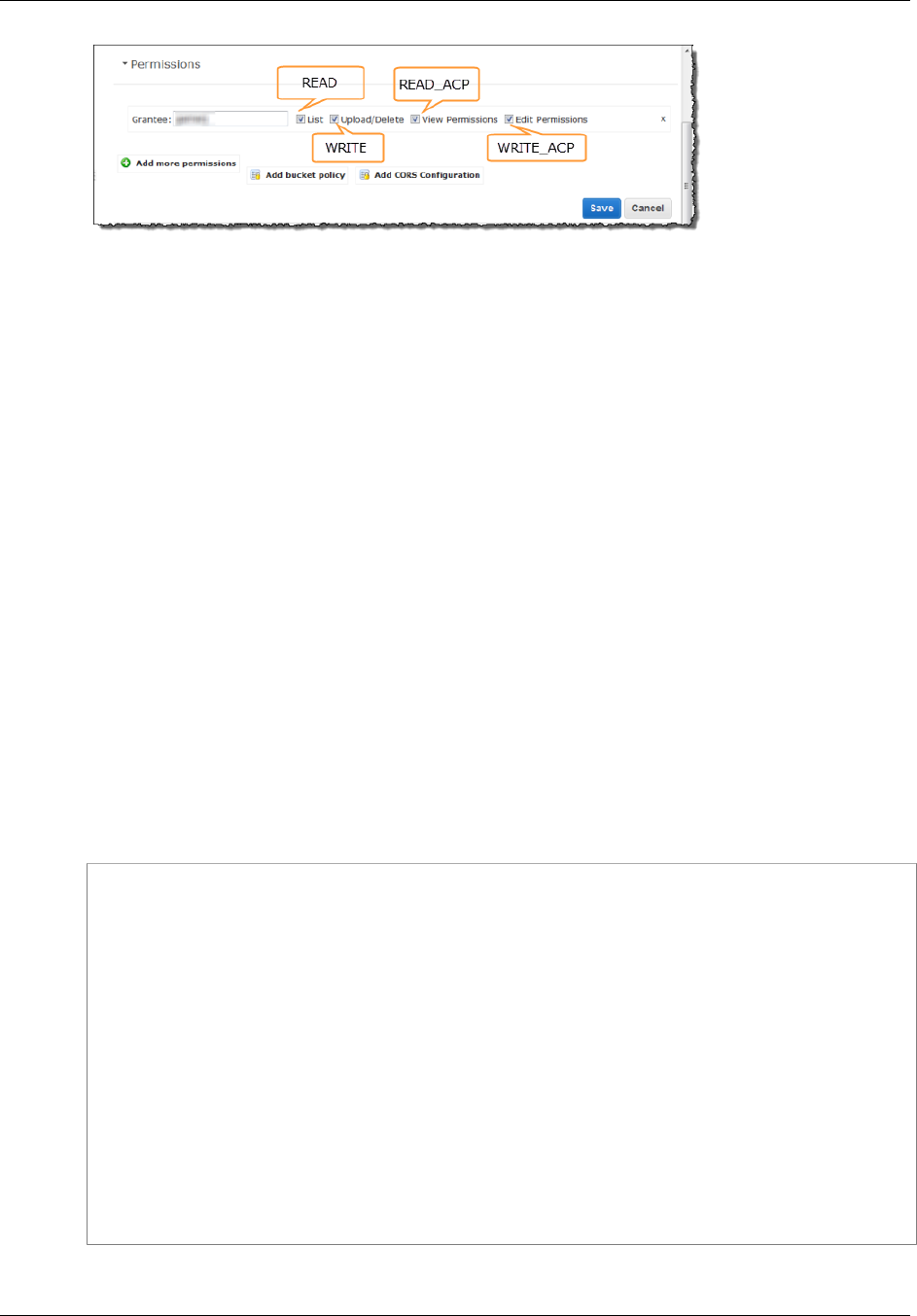

Granting the Log Delivery Group WRITE and READ_ACP Permissions .............................. 550

Example: AWS SDK for .NET ...................................................................................... 551

Log Format ....................................................................................................................... 553

Custom Access Log Information .................................................................................. 556

Programming Considerations for Extensible Server Access Log Format .............................. 556

Additional Logging for Copy Operations ........................................................................ 556

Deleting Log Files ............................................................................................................. 559

AWS SDKs and Explorers .......................................................................................................... 560

Specifying Signature Version in Request Authentication ........................................................... 561

Set Up the AWS CLI ......................................................................................................... 562

Using the AWS SDK for Java ............................................................................................. 563

The Java API Organization ......................................................................................... 564

Testing the Java Code Examples ................................................................................. 564

Using the AWS SDK for .NET ............................................................................................. 565

The .NET API Organization ......................................................................................... 565

Running the Amazon S3 .NET Code Examples .............................................................. 566

Using the AWS SDK for PHP and Running PHP Examples ...................................................... 566

AWS SDK for PHP Levels ......................................................................................... 566

Running PHP Examples ............................................................................................. 567

Related Resources .................................................................................................... 568

Using the AWS SDK for Ruby - Version 2 ............................................................................. 568

The Ruby API Organization ........................................................................................ 568

Testing the Ruby Script Examples ............................................................................... 568

Using the AWS SDK for Python (Boto) ................................................................................. 569

Appendices ............................................................................................................................... 570

Appendix A: Using the SOAP API ........................................................................................ 570

Common SOAP API Elements ..................................................................................... 570

Authenticating SOAP Requests .................................................................................... 571

Setting Access Policy with SOAP ................................................................................. 571

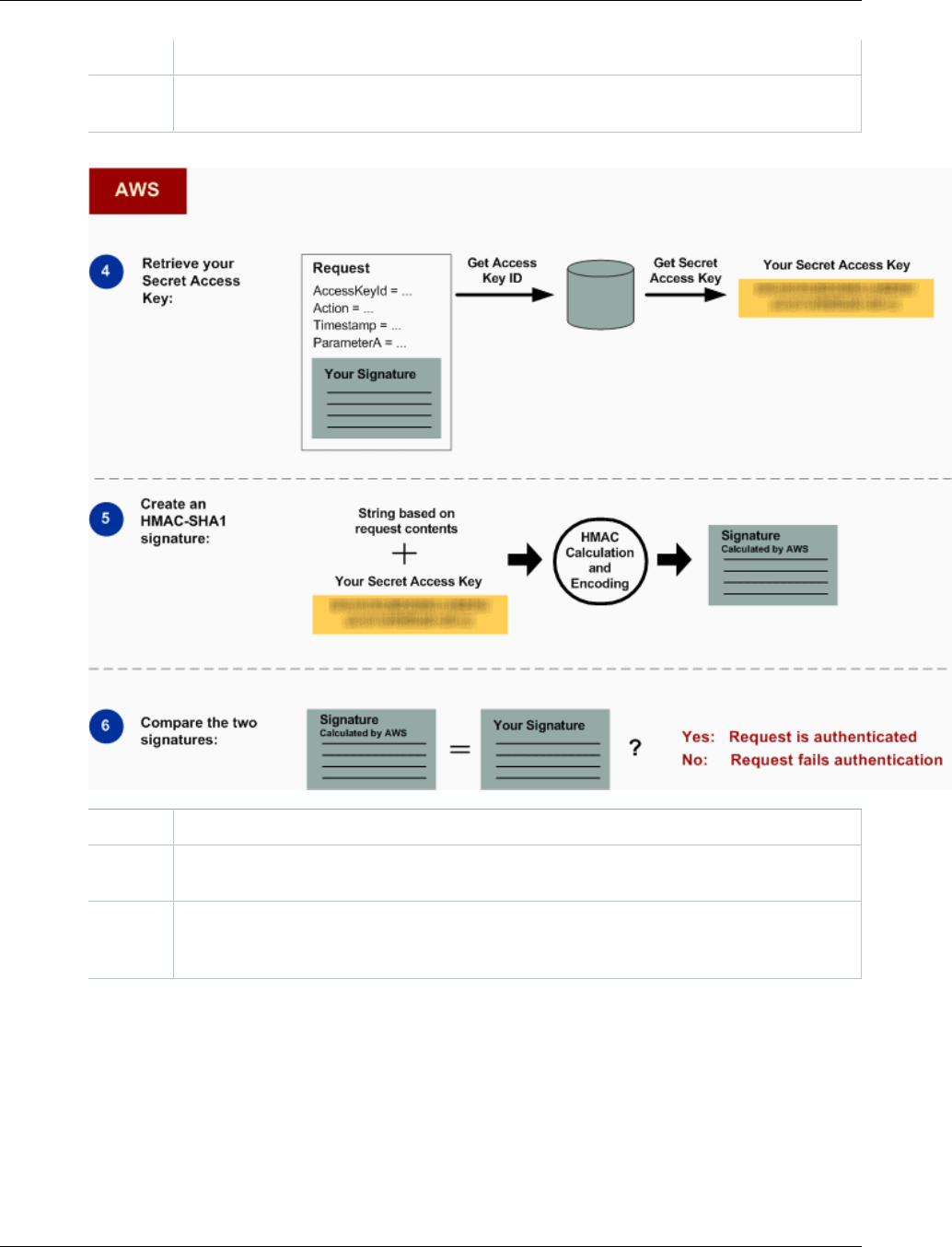

Appendix B: Authenticating Requests (AWS Signature Version 2) ............................................. 573

Authenticating Requests Using the REST API ................................................................ 574

Signing and Authenticating REST Requests .................................................................. 575

Browser-Based Uploads Using POST ........................................................................... 586

Resources ................................................................................................................................ 602

Document History ...................................................................................................................... 604

AWS Glossary .......................................................................................................................... 614

What Is Amazon S3?

Amazon Simple Storage Service is storage for the Internet. It is designed to make web-scale computing easier for developers.

Amazon S3 has a simple web services interface that you can use to store and retrieve any amount of data, at any time, from anywhere on the web. It gives any developer access to the same highly scalable, reliable, fast, inexpensive data storage infrastructure that Amazon uses to run its own global network of web sites. The service aims to maximize benefits of scale and to pass those benefits on to developers.

This guide explains the core concepts of Amazon S3, such as buckets and objects, and how to work with these resources using the Amazon S3 application programming interface (API).

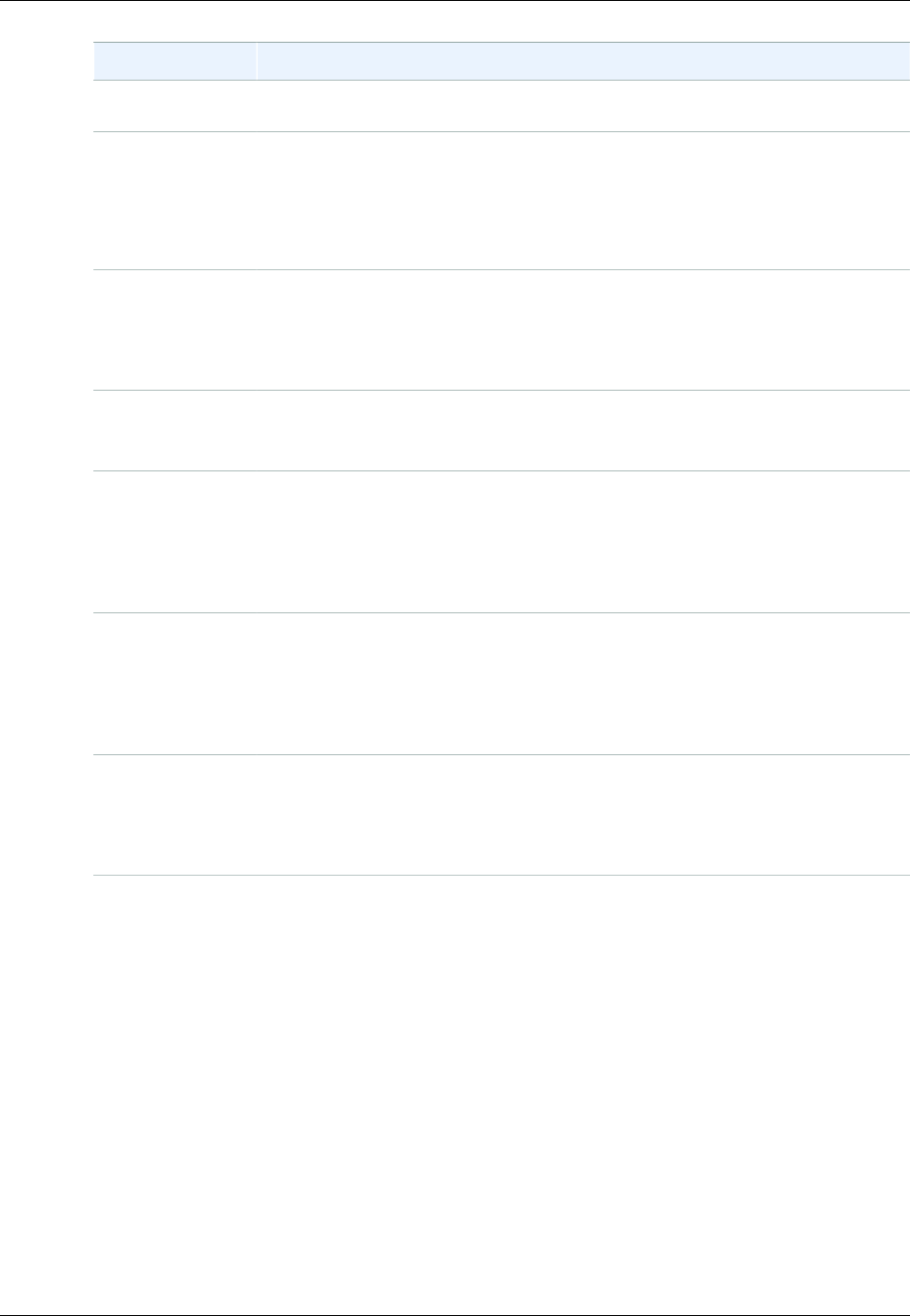

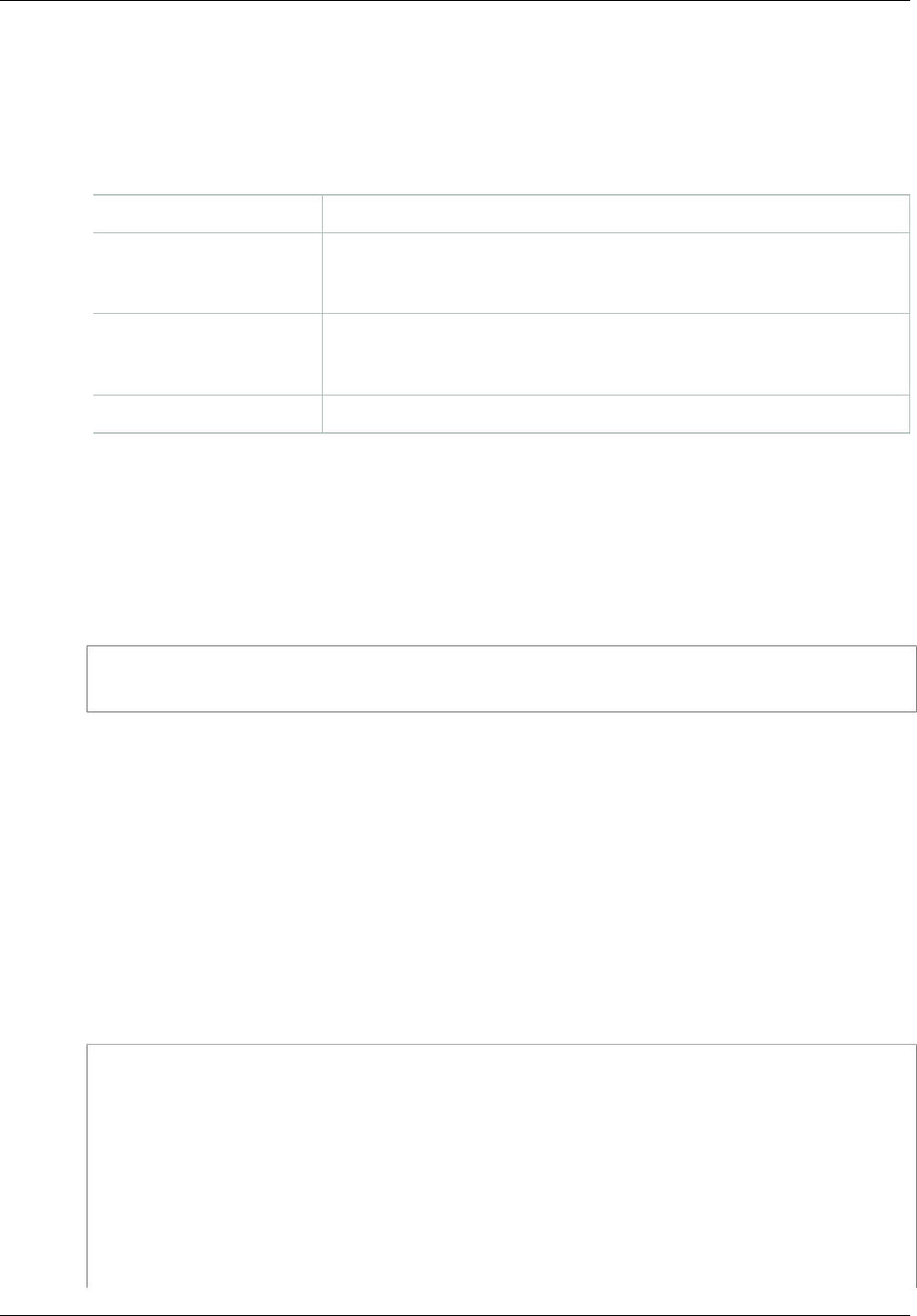

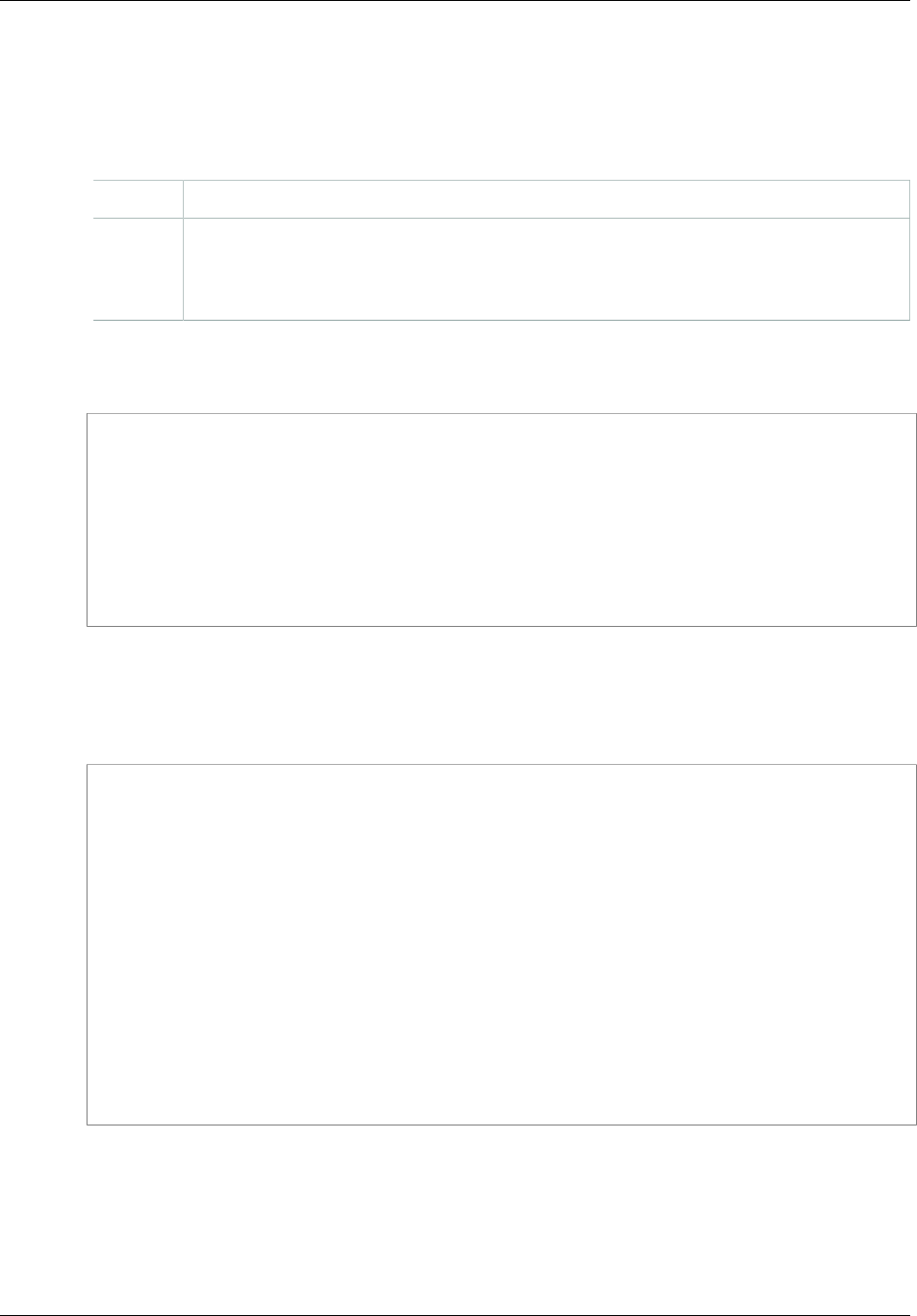

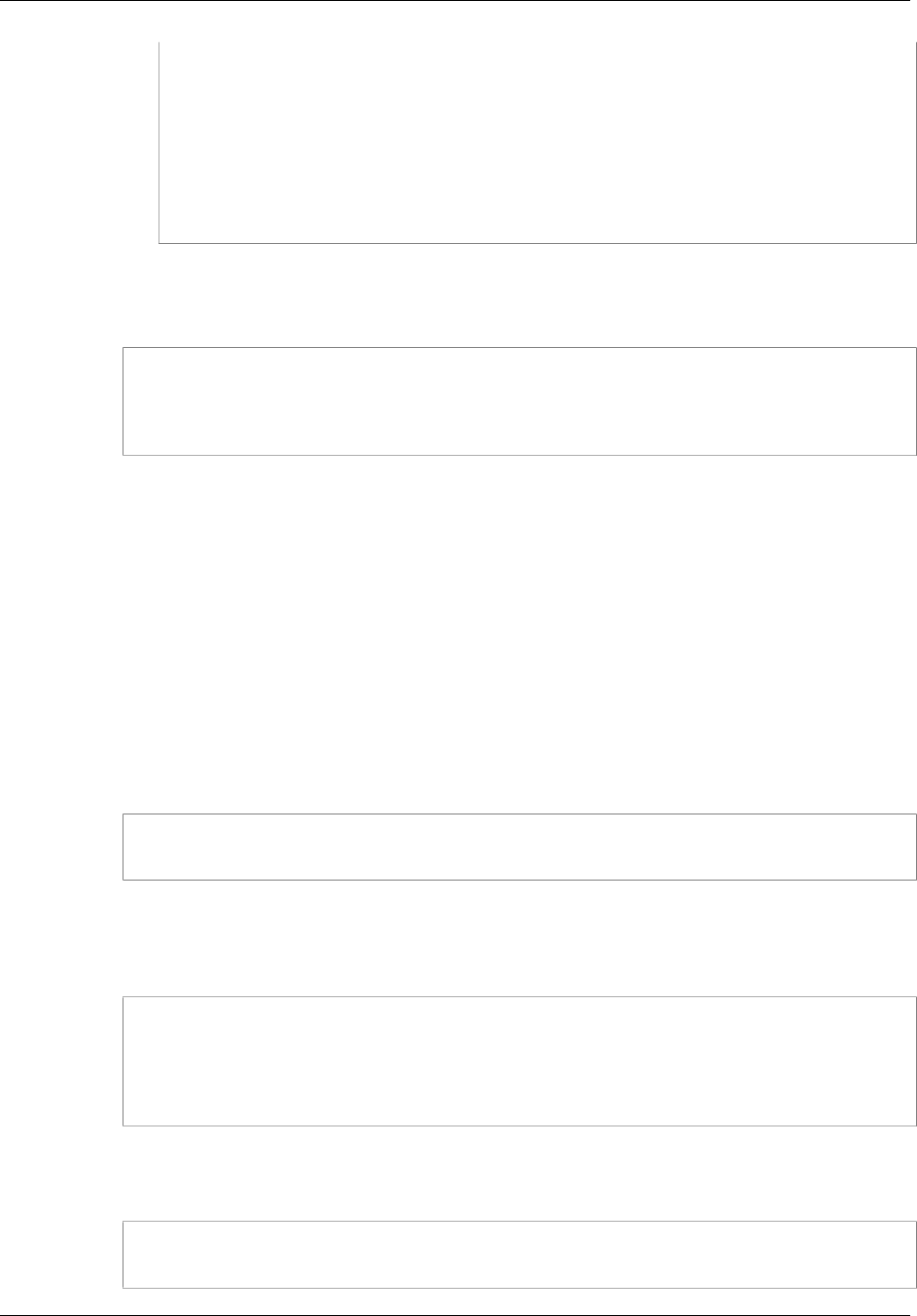

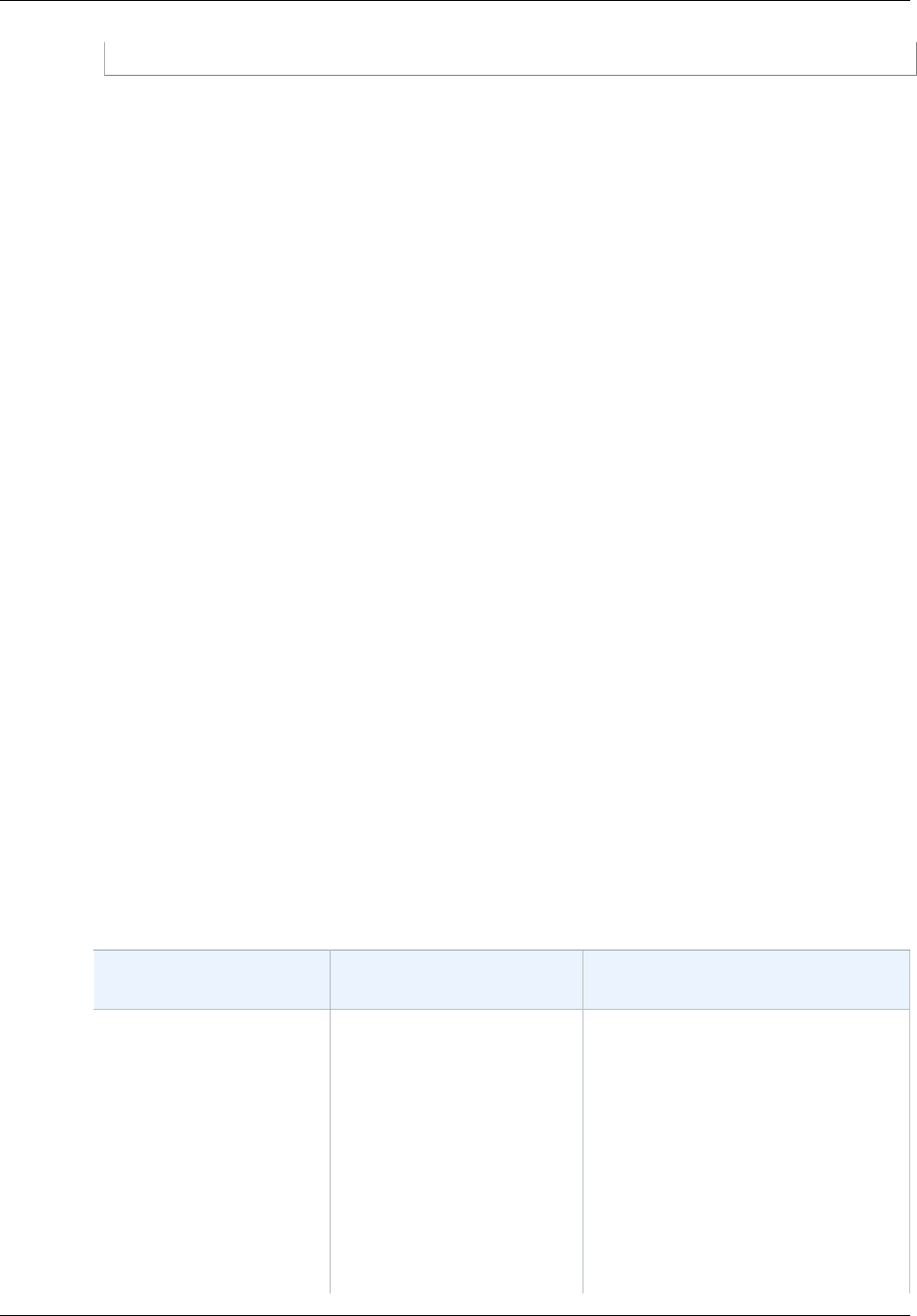

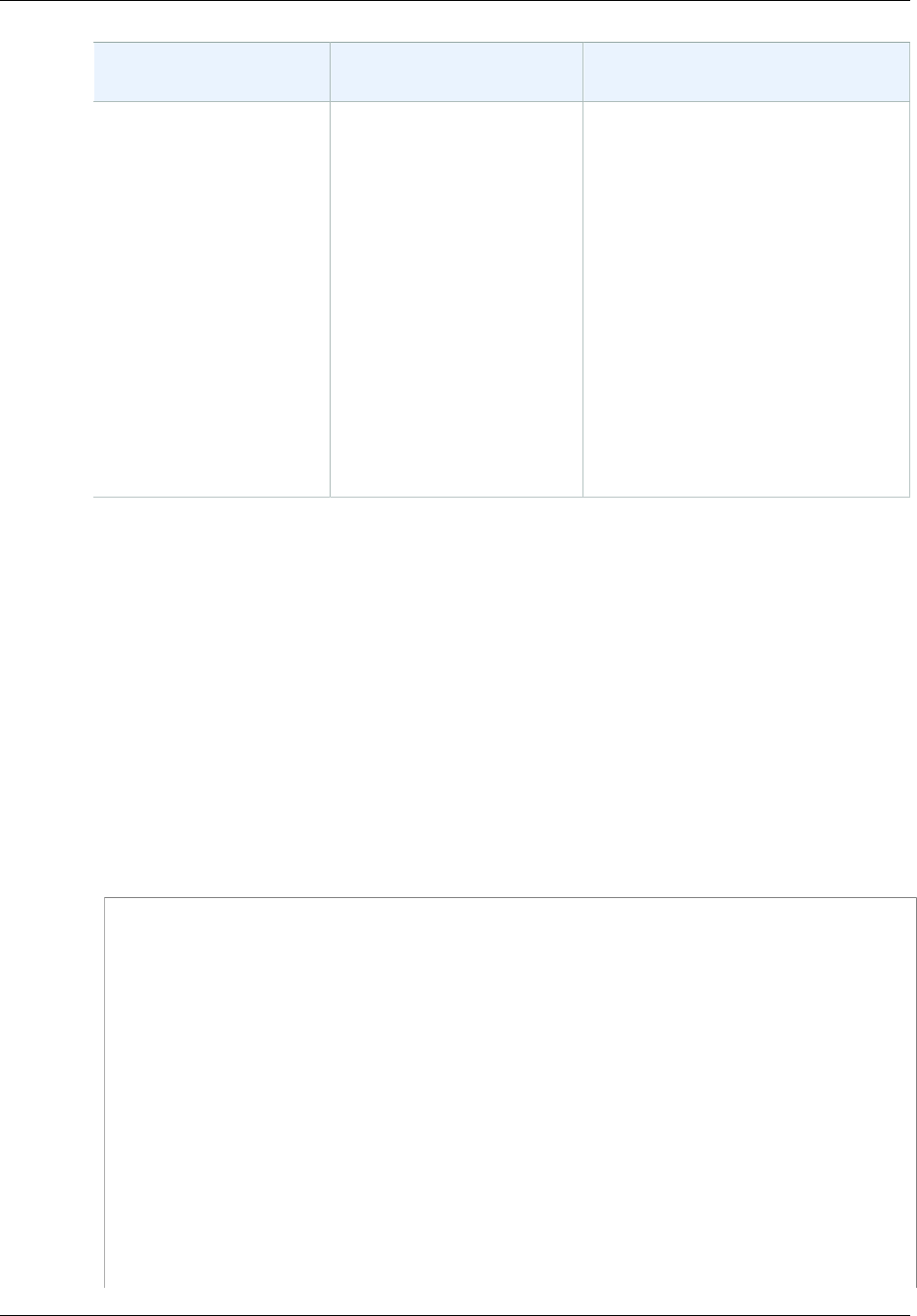

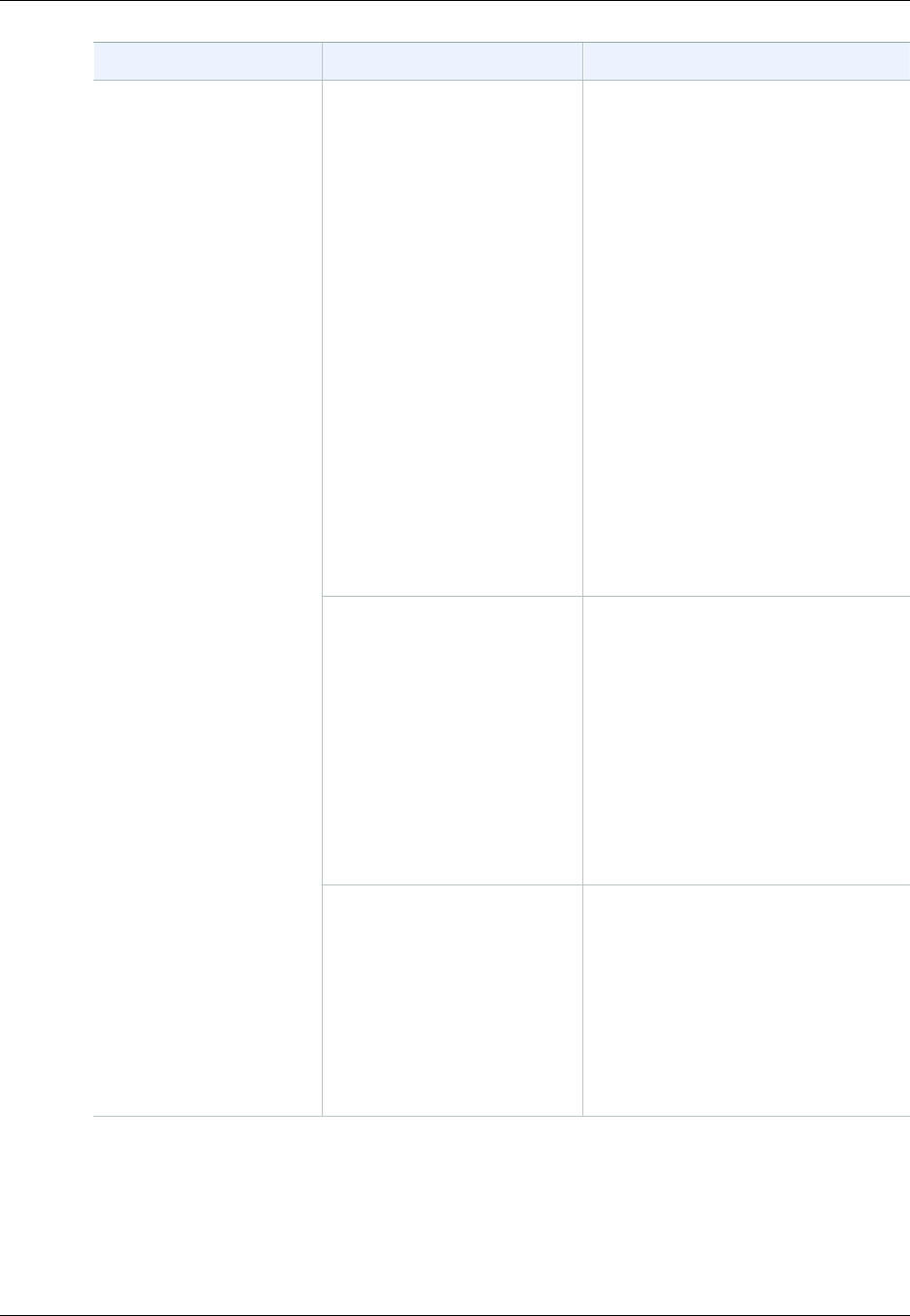

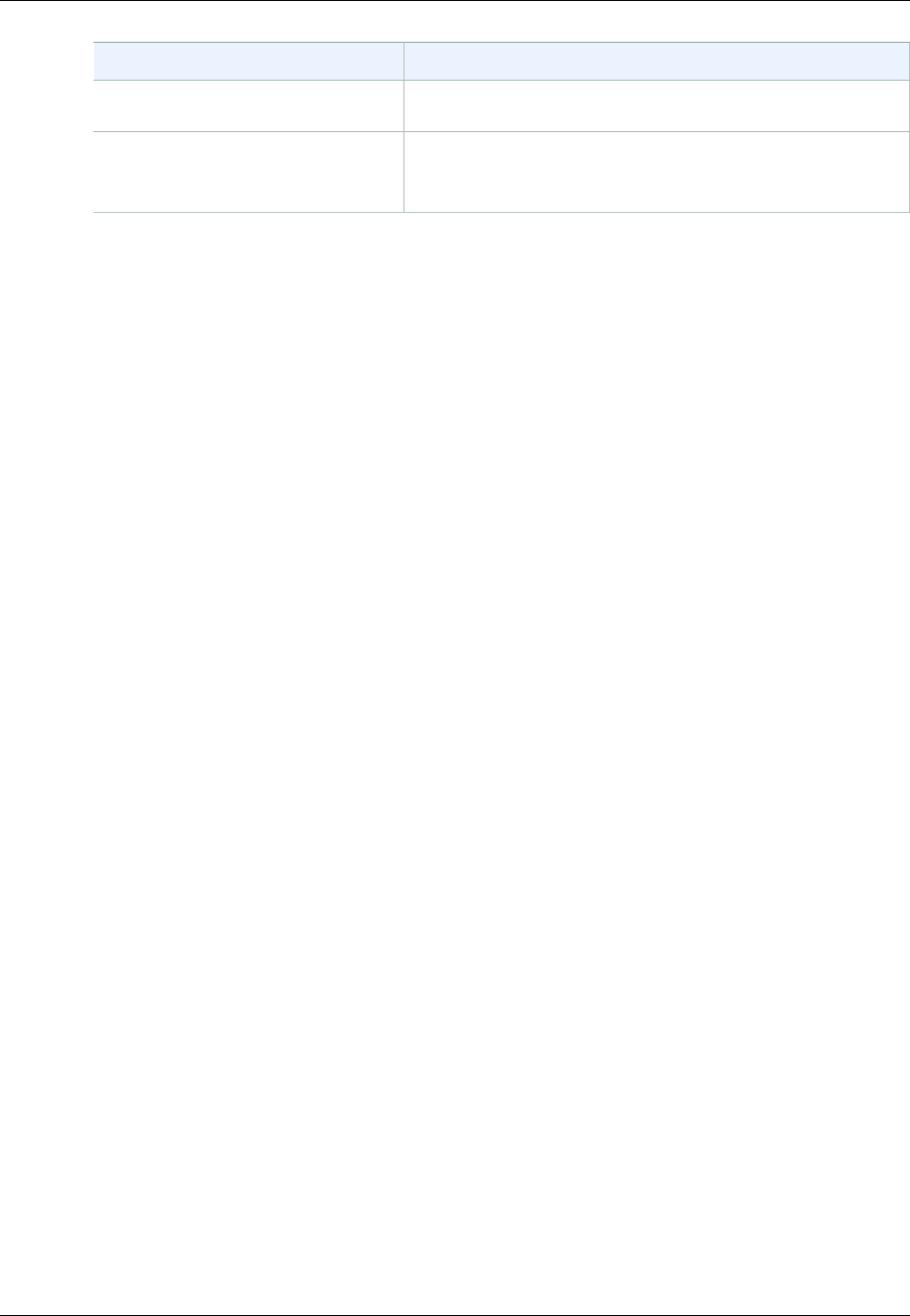

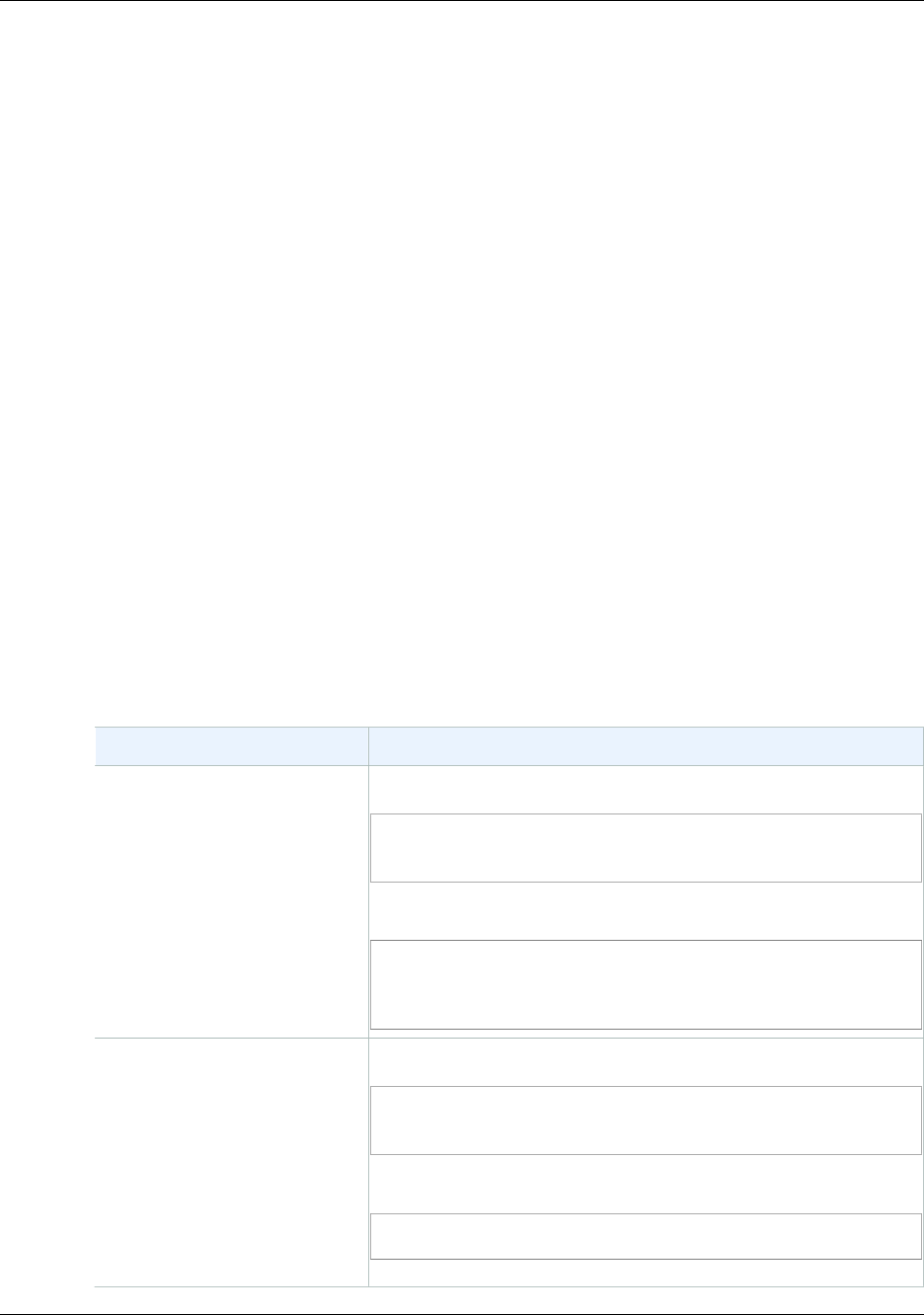

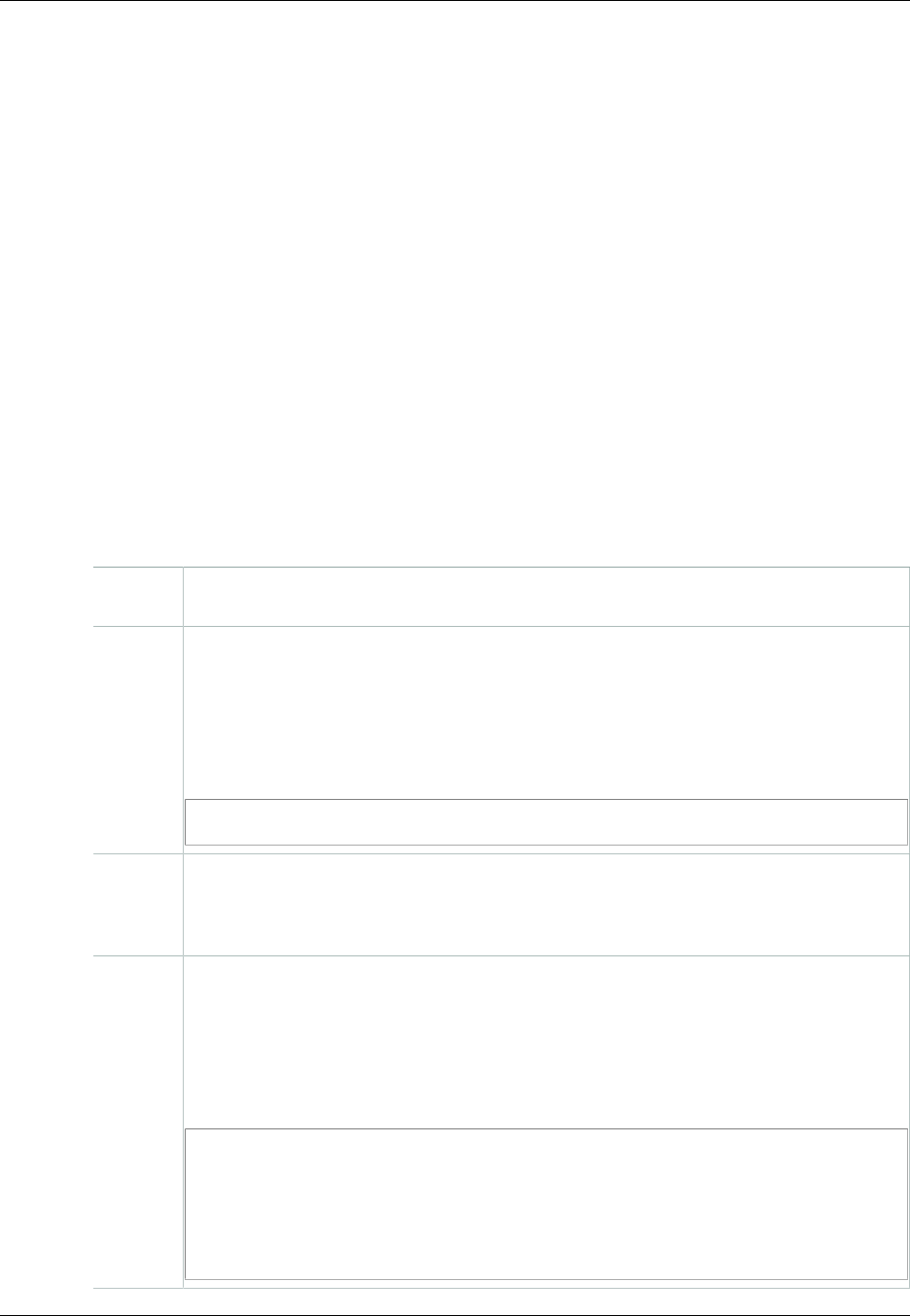

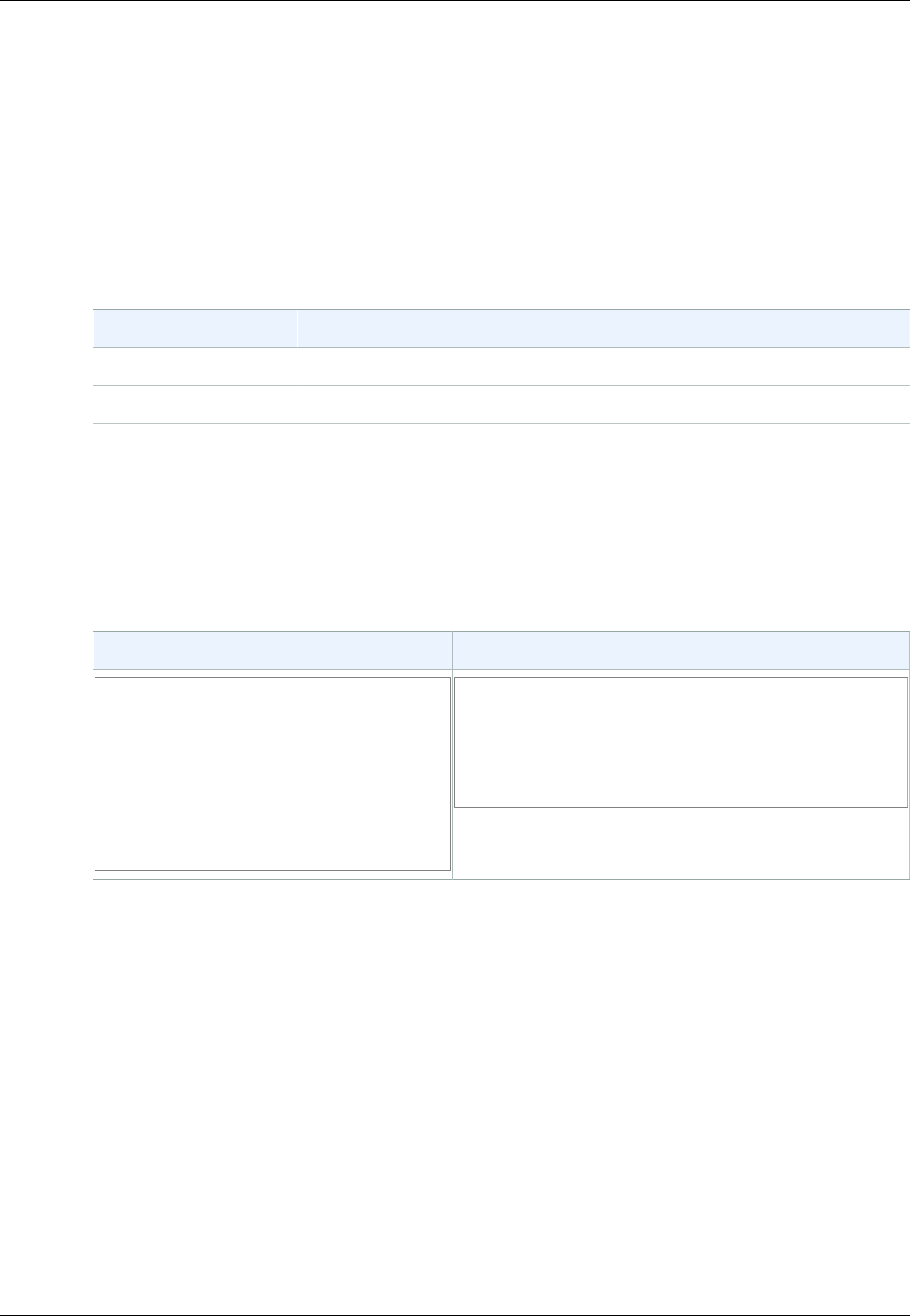

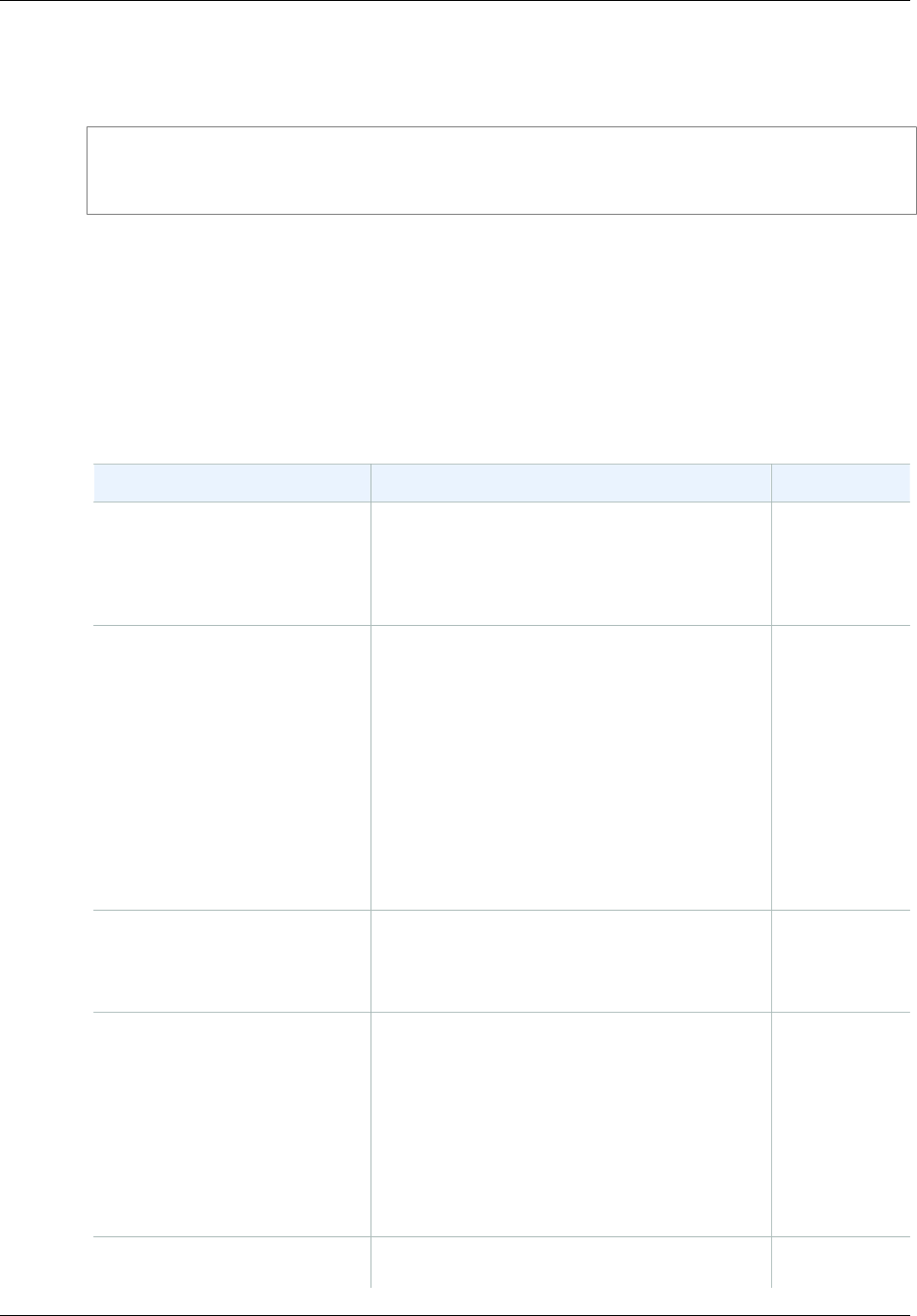

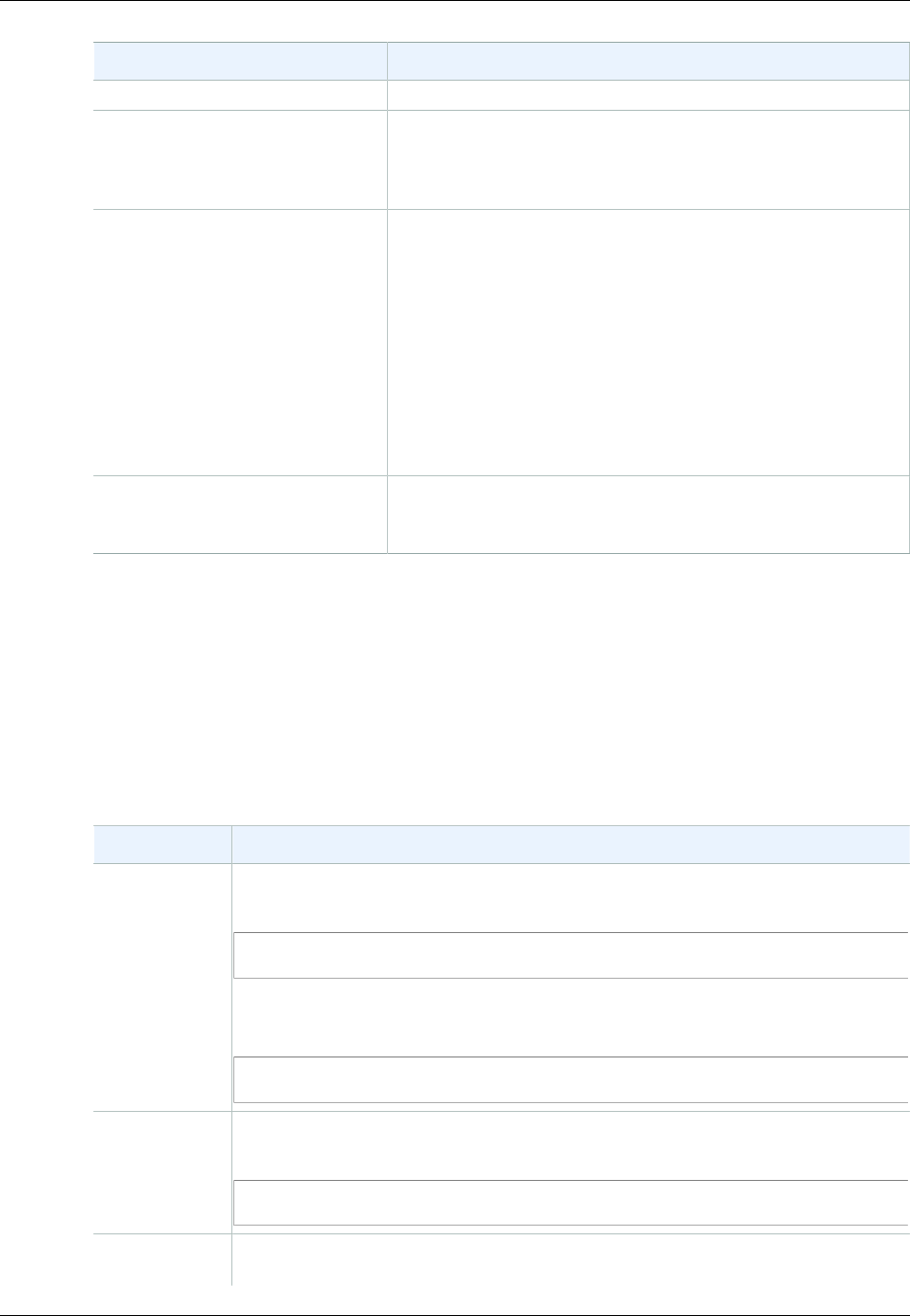

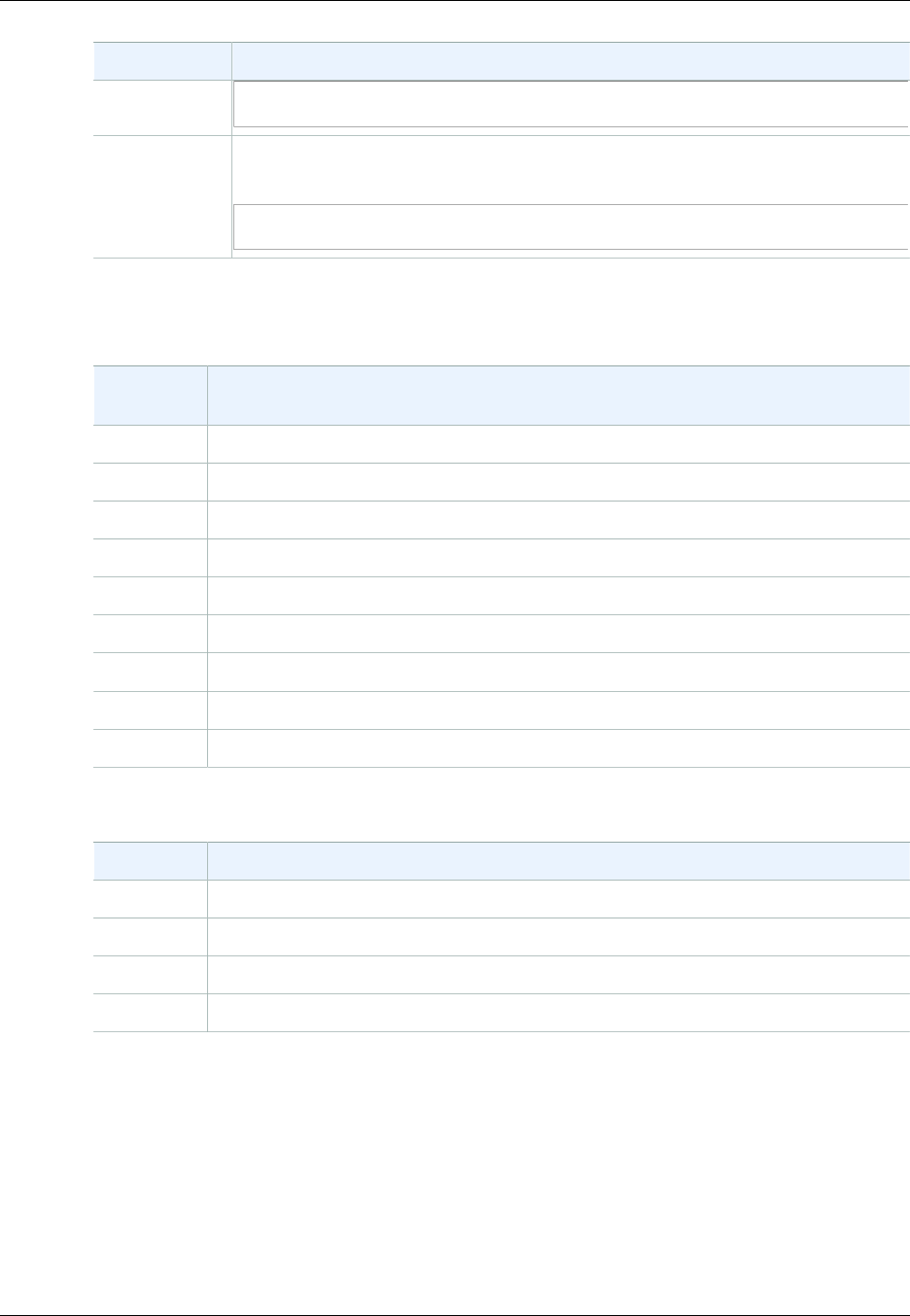

How Do I...?

| Information | Relevant Sections |
| --- | --- |
| General product overview and pricing | Amazon S3 |
| Get a quick hands-on introduction to Amazon S3 | Amazon Simple Storage Service Getting Started Guide |
| Learn about Amazon S3 key terminology and concepts | Introduction to Amazon S3 (p. 2) |
| How do I work with buckets? | Working with Amazon S3 Buckets (p. 58) |
| How do I work with objects? | Working with Amazon S3 Objects (p. 98) |
| How do I make requests? | Making Requests (p. 11) |
| How do I manage access to my resources? | Managing Access Permissions to Your Amazon S3 Resources (p. 266) |

Introduction to Amazon S3

This introduction to Amazon Simple Storage Service is intended to give you a detailed summary of this web service. After reading this section, you should have a good idea of what it offers and how it can fit in with your business.

Topics

• Overview of Amazon S3 and This Guide (p. 2)

• Advantages to Amazon S3 (p. 2)

• Amazon S3 Concepts (p. 3)

• Features (p. 6)

• Amazon S3 Application Programming Interfaces (API) (p. 8)

• Paying for Amazon S3 (p. 9)

• Related Services (p. 9)

Overview of Amazon S3 and This Guide

Amazon S3 has a simple web services interface that you can use to store and retrieve any amount of data, at any time, from anywhere on the web.

This guide describes how you send requests to create buckets, store and retrieve your objects, and manage permissions on your resources. The guide also describes access control and the authentication process. Access control defines who can access objects and buckets within Amazon S3, and the type of access (e.g., READ and WRITE). The authentication process verifies the identity of a user who is trying to access Amazon Web Services (AWS).

Advantages to Amazon S3

Amazon S3 is intentionally built with a minimal feature set that focuses on simplicity and robustness.

Following are some of the advantages of the Amazon S3 service:

• Create Buckets – Create and name a bucket that stores data. Buckets are the fundamental container in Amazon S3 for data storage.

• Store data in Buckets – Store an infinite amount of data in a bucket. Upload as many objects as you like into an Amazon S3 bucket. Each object can contain up to 5 TB of data. Each object is stored and retrieved using a unique developer-assigned key.

• Download data – Download your data or enable others to do so. Download your data any time you like or allow others to do the same.

• Permissions – Grant or deny access to others who want to upload or download data into your Amazon S3 bucket. Grant upload and download permissions to three types of users. Authentication mechanisms can help keep data secure from unauthorized access.

• Standard interfaces – Use standards-based REST and SOAP interfaces designed to work with any Internet-development toolkit.

Note

SOAP support over HTTP is deprecated, but it is still available over HTTPS. New Amazon S3 features will not be supported for SOAP. We recommend that you use either the REST API or the AWS SDKs.

Amazon S3 Concepts

Topics

• Buckets (p. 3)

• Objects (p. 3)

• Keys (p. 4)

• Regions (p. 4)

• Amazon S3 Data Consistency Model (p. 4)

This section describes key concepts and terminology you need to understand to use Amazon S3 effectively. They are presented in the order you will most likely encounter them.

Buckets

A bucket is a container for objects stored in Amazon S3. Every object is contained in a bucket. For example, if the object named photos/puppy.jpg is stored in the johnsmith bucket, then it is addressable using the URL http://johnsmith.s3.amazonaws.com/photos/puppy.jpg.
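
The addressing rule above can be sketched in a few lines of Python. This is a minimal illustration, not part of any AWS SDK: the function name `virtual_hosted_url` and the choice to percent-encode the key are assumptions made for the example.

```python
from urllib.parse import quote


def virtual_hosted_url(bucket: str, key: str, scheme: str = "http") -> str:
    """Build the URL that addresses `key` in `bucket` (hypothetical helper)."""
    # Percent-encode the key but keep "/" so path-like keys stay readable.
    return f"{scheme}://{bucket}.s3.amazonaws.com/{quote(key, safe='/')}"


print(virtual_hosted_url("johnsmith", "photos/puppy.jpg"))
# prints http://johnsmith.s3.amazonaws.com/photos/puppy.jpg
```

The bucket name becomes part of the host name and the key becomes the path, which is why the bucket sits at the top of the Amazon S3 namespace.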

Buckets serve several purposes: they organize the Amazon S3 namespace at the highest level, they identify the account responsible for storage and data transfer charges, they play a role in access control, and they serve as the unit of aggregation for usage reporting.

You can configure buckets so that they are created in a specific region. For more information, see Buckets and Regions (p. 60). You can also configure a bucket so that every time an object is added to it, Amazon S3 generates a unique version ID and assigns it to the object. For more information, see Versioning (p. 423).

For more information about buckets, see Working with Amazon S3 Buckets (p. 58).

Objects

Objects are the fundamental entities stored in Amazon S3. Objects consist of object data and metadata. The data portion is opaque to Amazon S3. The metadata is a set of name-value pairs that describe the object. These include some default metadata, such as the date last modified, and standard HTTP metadata, such as Content-Type. You can also specify custom metadata at the time the object is stored.

An object is uniquely identified within a bucket by a key (name) and a version ID. For more information, see Keys (p. 4) and Versioning (p. 423).

Keys

A key is the unique identifier for an object within a bucket. Every object in a bucket has exactly one key. Because the combination of a bucket, key, and version ID uniquely identifies each object, Amazon S3 can be thought of as a basic data map between "bucket + key + version" and the object itself. Every object in Amazon S3 can be uniquely addressed through the combination of the web service endpoint, bucket name, key, and optionally, a version. For example, in the URL http://doc.s3.amazonaws.com/2006-03-01/AmazonS3.wsdl, "doc" is the name of the bucket and "2006-03-01/AmazonS3.wsdl" is the key.

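The "bucket + key + version" map described above can be pictured with a small Python sketch. The helper name `split_virtual_hosted_url`, the in-memory `objects` dictionary, and the use of `None` to mean "no version ID" are all assumptions made for illustration; they are not Amazon S3 or SDK APIs.

```python
from typing import Dict, Optional, Tuple
from urllib.parse import unquote, urlparse

S3_HOST_SUFFIX = ".s3.amazonaws.com"


def split_virtual_hosted_url(url: str) -> Tuple[str, str]:
    """Return (bucket, key) for a URL of the form shown in the text."""
    parsed = urlparse(url)
    host = parsed.netloc
    if not host.endswith(S3_HOST_SUFFIX):
        raise ValueError(f"not a virtual-hosted-style Amazon S3 URL: {url}")
    bucket = host[: -len(S3_HOST_SUFFIX)]
    # Drop only the single "/" that separates the host from the key.
    key = unquote(parsed.path[1:])
    return bucket, key


# Toy data map: (bucket, key, version ID) -> object data.
# None stands in for "no version ID" (an assumption of this sketch).
ObjectId = Tuple[str, str, Optional[str]]
objects: Dict[ObjectId, bytes] = {}

bucket, key = split_virtual_hosted_url(
    "http://doc.s3.amazonaws.com/2006-03-01/AmazonS3.wsdl"
)
objects[(bucket, key, None)] = b"<definitions/>"  # placeholder object data
print(bucket, key)  # prints doc 2006-03-01/AmazonS3.wsdl
```
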
Regions

You can choose the geographical region where Amazon S3 will store the buckets you create. You might choose a region to optimize latency, minimize costs, or address regulatory requirements. Amazon S3 currently supports the following regions:

Amazon S3 currently supports the following regions:

• US East (N. Virginia) Region – Uses Amazon S3 servers in Northern Virginia

• US West (N. California) Region – Uses Amazon S3 servers in Northern California

• US West (Oregon) Region – Uses Amazon S3 servers in Oregon

• Asia Pacific (Mumbai) Region – Uses Amazon S3 servers in Mumbai

• Asia Pacific (Seoul) Region – Uses Amazon S3 servers in Seoul

• Asia Pacific (Singapore) Region – Uses Amazon S3 servers in Singapore

• Asia Pacific (Sydney) Region – Uses Amazon S3 servers in Sydney

• Asia Pacific (Tokyo) Region – Uses Amazon S3 servers in Tokyo

• EU (Frankfurt) Region – Uses Amazon S3 servers in Frankfurt

• EU (Ireland) Region – Uses Amazon S3 servers in Ireland

• South America (São Paulo) Region – Uses Amazon S3 servers in São Paulo

Objects stored in a region never leave the region unless you explicitly transfer them to another region. For example, objects stored in the EU (Ireland) region never leave it. For more information about Amazon S3 regions and endpoints, go to Regions and Endpoints in the AWS General Reference.
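
As a rough sketch of how an application might turn a region choice into an endpoint, the following Python maps the region names listed above to region codes. The codes and the `s3.<code>.amazonaws.com` host name pattern do not appear in this section; they are given here as commonly documented values, so treat them as assumptions and confirm them against Regions and Endpoints in the AWS General Reference. The function name `regional_endpoint` is hypothetical.

```python
# Region name (as listed above) -> region code (assumed; verify in the
# AWS General Reference before relying on these values).
REGION_CODES = {
    "US East (N. Virginia)": "us-east-1",
    "US West (N. California)": "us-west-1",
    "US West (Oregon)": "us-west-2",
    "Asia Pacific (Mumbai)": "ap-south-1",
    "Asia Pacific (Seoul)": "ap-northeast-2",
    "Asia Pacific (Singapore)": "ap-southeast-1",
    "Asia Pacific (Sydney)": "ap-southeast-2",
    "Asia Pacific (Tokyo)": "ap-northeast-1",
    "EU (Frankfurt)": "eu-central-1",
    "EU (Ireland)": "eu-west-1",
    "South America (São Paulo)": "sa-east-1",
}


def regional_endpoint(region_name: str) -> str:
    """Return an assumed region-specific host name for Amazon S3."""
    return f"s3.{REGION_CODES[region_name]}.amazonaws.com"


print(regional_endpoint("EU (Ireland)"))  # prints s3.eu-west-1.amazonaws.com
```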

Amazon S3 Data Consistency Model

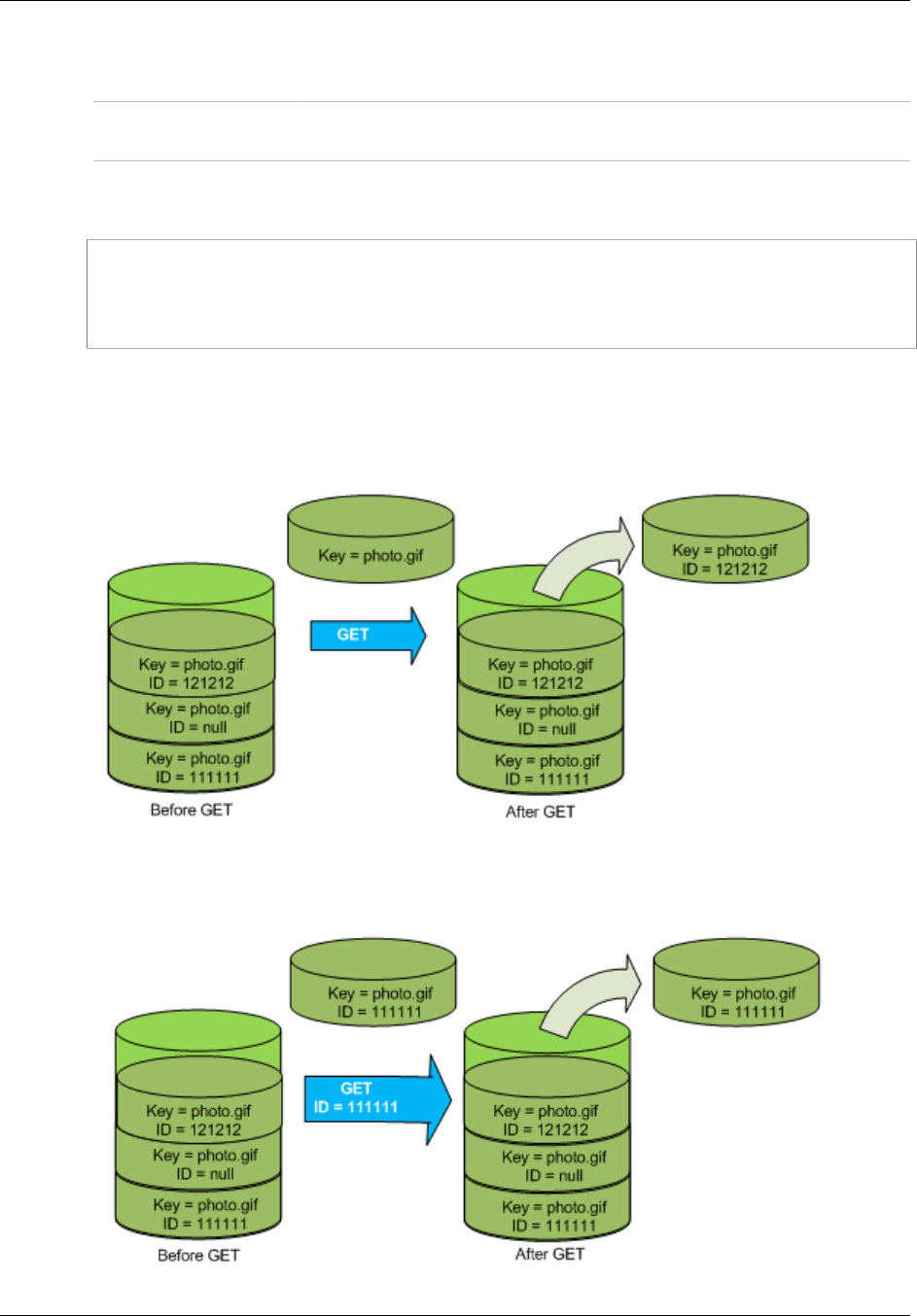

Amazon S3 provides read-after-write consistency for PUTS of new objects in your S3 bucket in all regions with one caveat. The caveat is that if you make a HEAD or GET request to the key name (to find if the object exists) before creating the object, Amazon S3 provides eventual consistency for read-after-write.

Amazon S3 offers eventual consistency for overwrite PUTS and DELETES in all regions.

Updates to a single key are atomic. For example, if you PUT to an existing key, a subsequent read might return the old data or the updated data, but it will never return corrupted or partial data.

Amazon S3 achieves high availability by replicating data across multiple servers within Amazon's data centers. If a PUT request is successful, your data is safely stored. However, information about the changes must replicate across Amazon S3, which can take some time, and so you might observe the following behaviors:

• A process writes a new object to Amazon S3 and immediately lists keys within its bucket. Until the change is fully propagated, the object might not appear in the list.

• A process replaces an existing object and immediately attempts to read it. Until the change is fully propagated, Amazon S3 might return the prior data.

• A process deletes an existing object and immediately attempts to read it. Until the deletion is fully propagated, Amazon S3 might return the deleted data.

• A process deletes an existing object and immediately lists keys within its bucket. Until the deletion is fully propagated, Amazon S3 might list the deleted object.
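
The behaviors in the list above can be reproduced with a toy model in Python. This is only a sketch under simplifying assumptions: the class `EventuallyConsistentStore` and its explicit `propagate()` step are invented for illustration, every read is served from a lagging copy, and the read-after-write guarantee for new objects is not modeled. It is not how Amazon S3 is implemented.

```python
from typing import Dict, List, Optional, Tuple


class EventuallyConsistentStore:
    """Toy model: accepted changes become visible to readers only later."""

    def __init__(self) -> None:
        self._visible: Dict[str, bytes] = {}
        # Accepted changes not yet visible: (key, value), or (key, None) for a delete.
        self._pending: List[Tuple[str, Optional[bytes]]] = []

    def put(self, key: str, value: bytes) -> None:
        self._pending.append((key, value))

    def delete(self, key: str) -> None:
        self._pending.append((key, None))

    def get(self, key: str) -> Optional[bytes]:
        # Reads see only propagated changes, so they can be stale.
        return self._visible.get(key)

    def list_keys(self) -> List[str]:
        return sorted(self._visible)

    def propagate(self) -> None:
        """Apply all pending changes, in the order they were accepted."""
        for key, value in self._pending:
            if value is None:
                self._visible.pop(key, None)
            else:
                self._visible[key] = value
        self._pending.clear()


store = EventuallyConsistentStore()
store.put("report.txt", b"v1")
print(store.list_keys())        # new object not listed yet
store.propagate()
store.put("report.txt", b"v2")
print(store.get("report.txt"))  # prior data
store.propagate()
store.delete("report.txt")
print(store.get("report.txt"))  # deleted data is still returned
print(store.list_keys())        # deleted object is still listed
store.propagate()
print(store.list_keys())        # deletion is now visible
```

An application that depends on seeing its own latest change should be written to tolerate each of these intermediate results.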

Note

Amazon S3 does not currently support object locking. If two PUT requests are simultaneously made to the same key, the request with the latest time stamp wins. If this is an issue, you will need to build an object-locking mechanism into your application.

Updates are key-based; there is no way to make atomic updates across keys. For example, you cannot make the update of one key dependent on the update of another key unless you design this functionality into your application.
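
The "latest time stamp wins" rule in the note can be illustrated with a short Python sketch. The `Write` record, its numeric `timestamp` field, and the `apply_write` function are hypothetical names for this example; the sketch shows only the resolution rule, not how Amazon S3 assigns or compares time stamps.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class Write:
    timestamp: float  # hypothetical request time stamp, in seconds
    value: bytes


def apply_write(store: Dict[str, Write], key: str, incoming: Write) -> None:
    """Keep whichever write to `key` carries the latest time stamp."""
    current = store.get(key)
    if current is None or incoming.timestamp >= current.timestamp:
        store[key] = incoming


store: Dict[str, Write] = {}
# Two clients write the same key; the requests are processed out of order.
apply_write(store, "config.json", Write(timestamp=2.0, value=b"from client B"))
apply_write(store, "config.json", Write(timestamp=1.0, value=b"from client A"))
print(store["config.json"].value)  # prints b'from client B'
```

Client A's data is discarded without any error being reported to client A, which is why an application that cannot tolerate lost updates needs its own locking or coordination.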

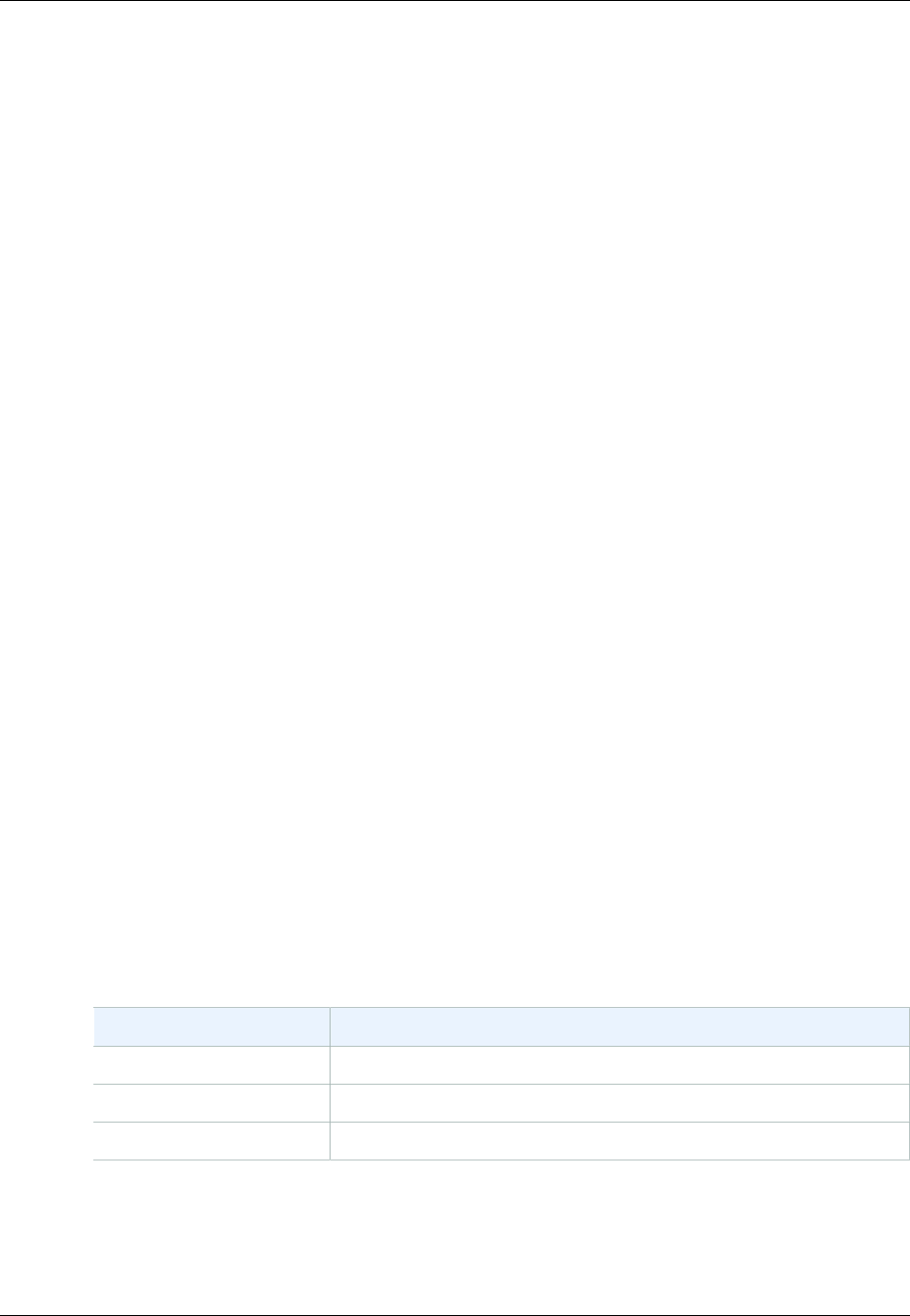

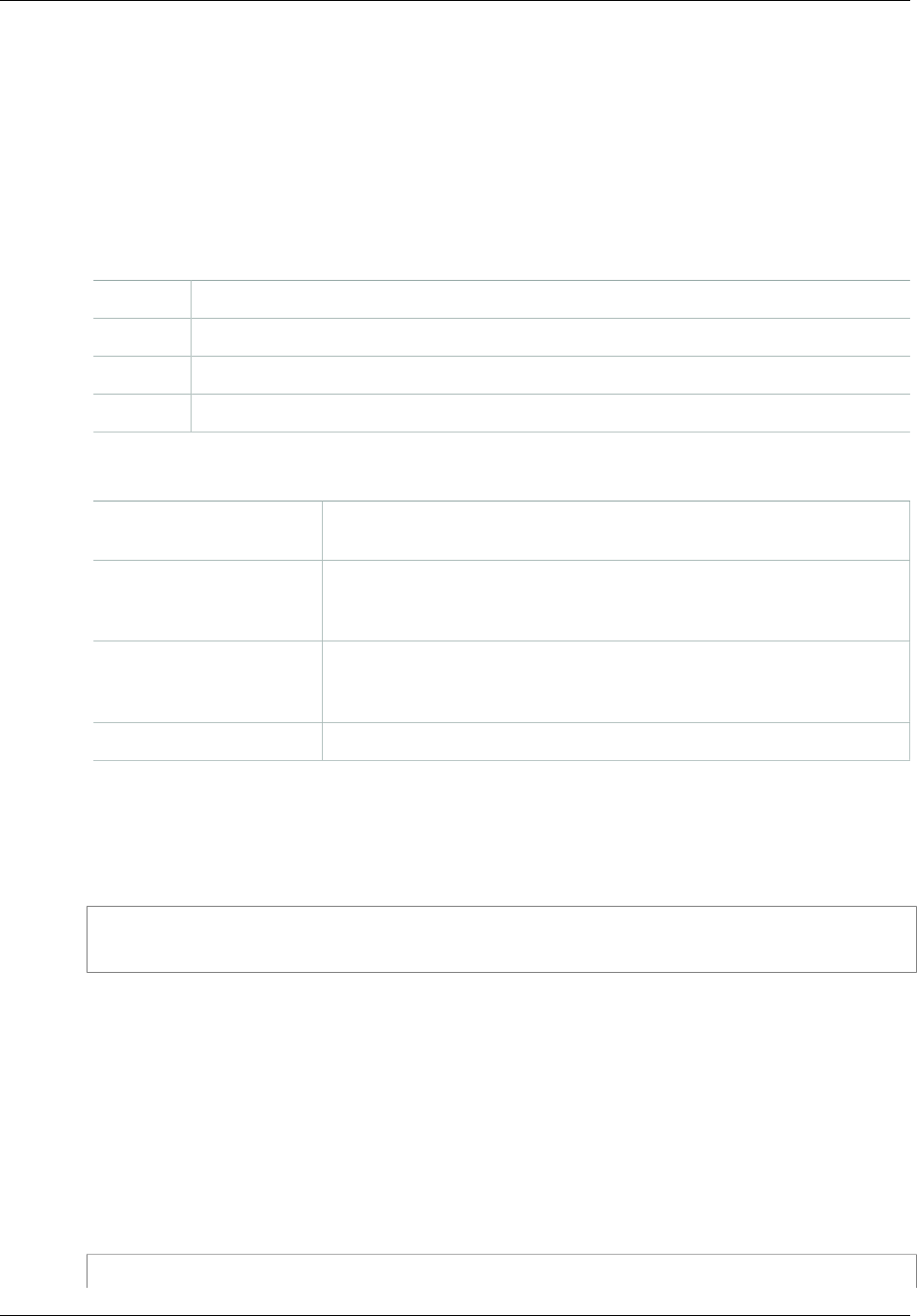

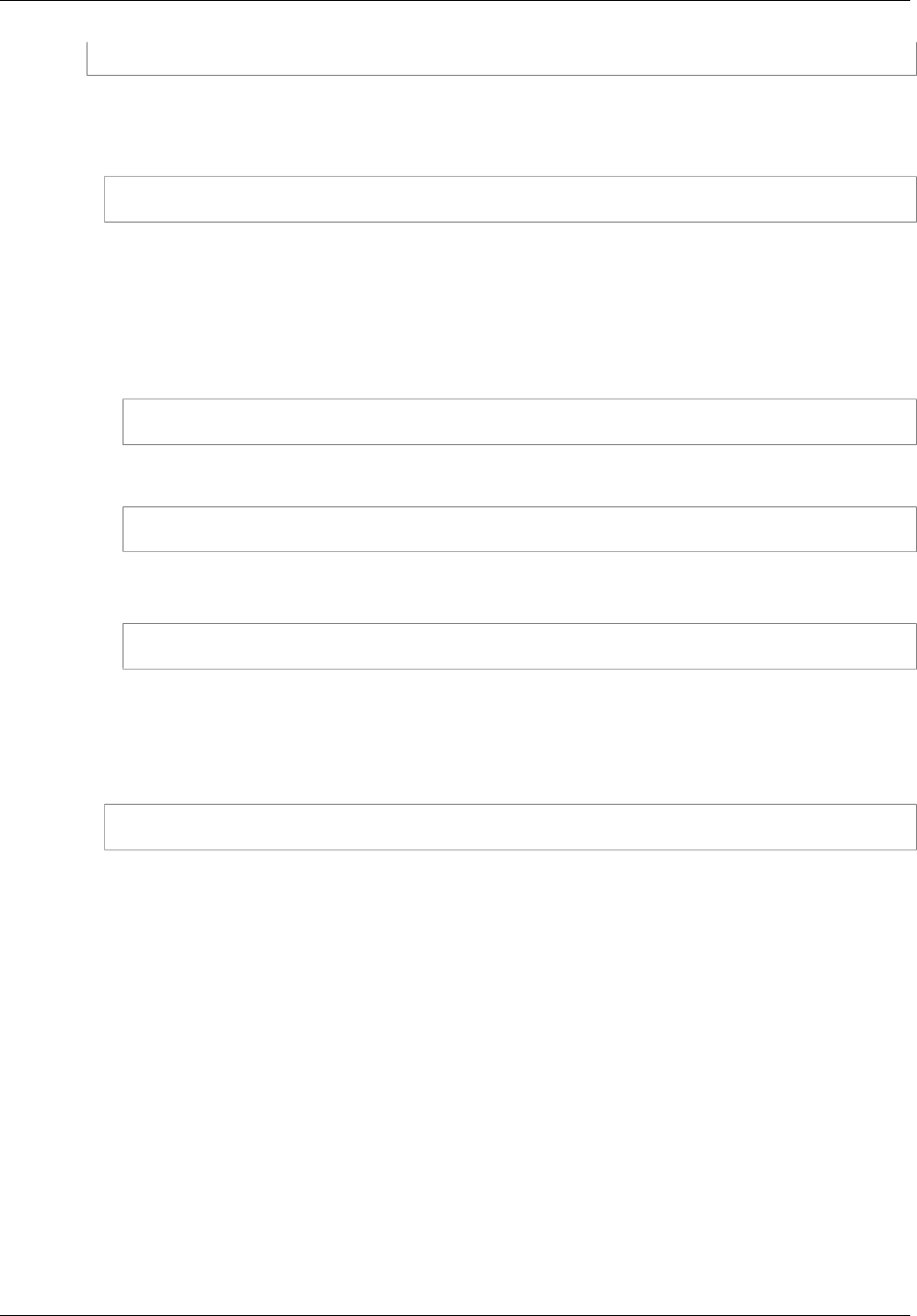

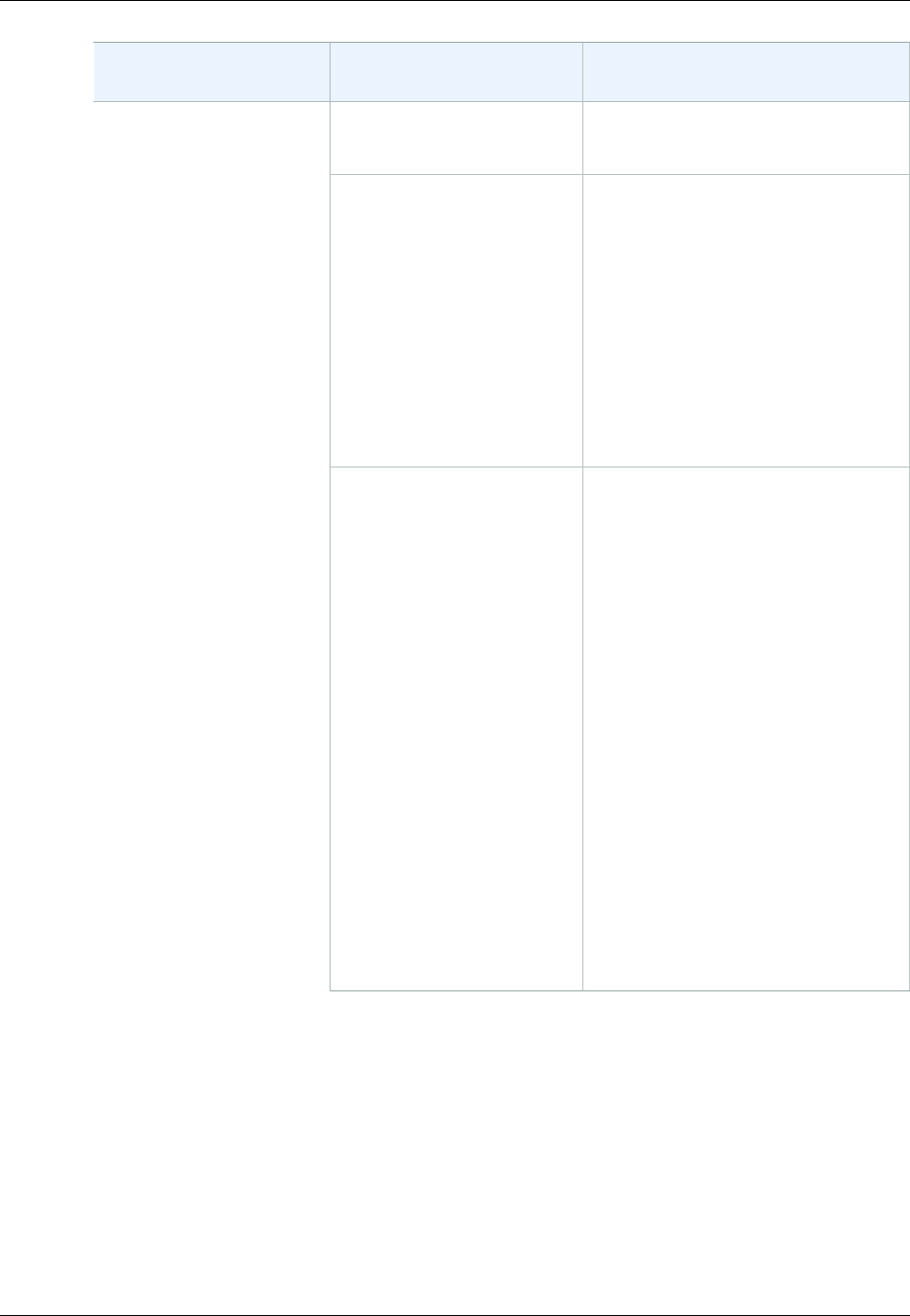

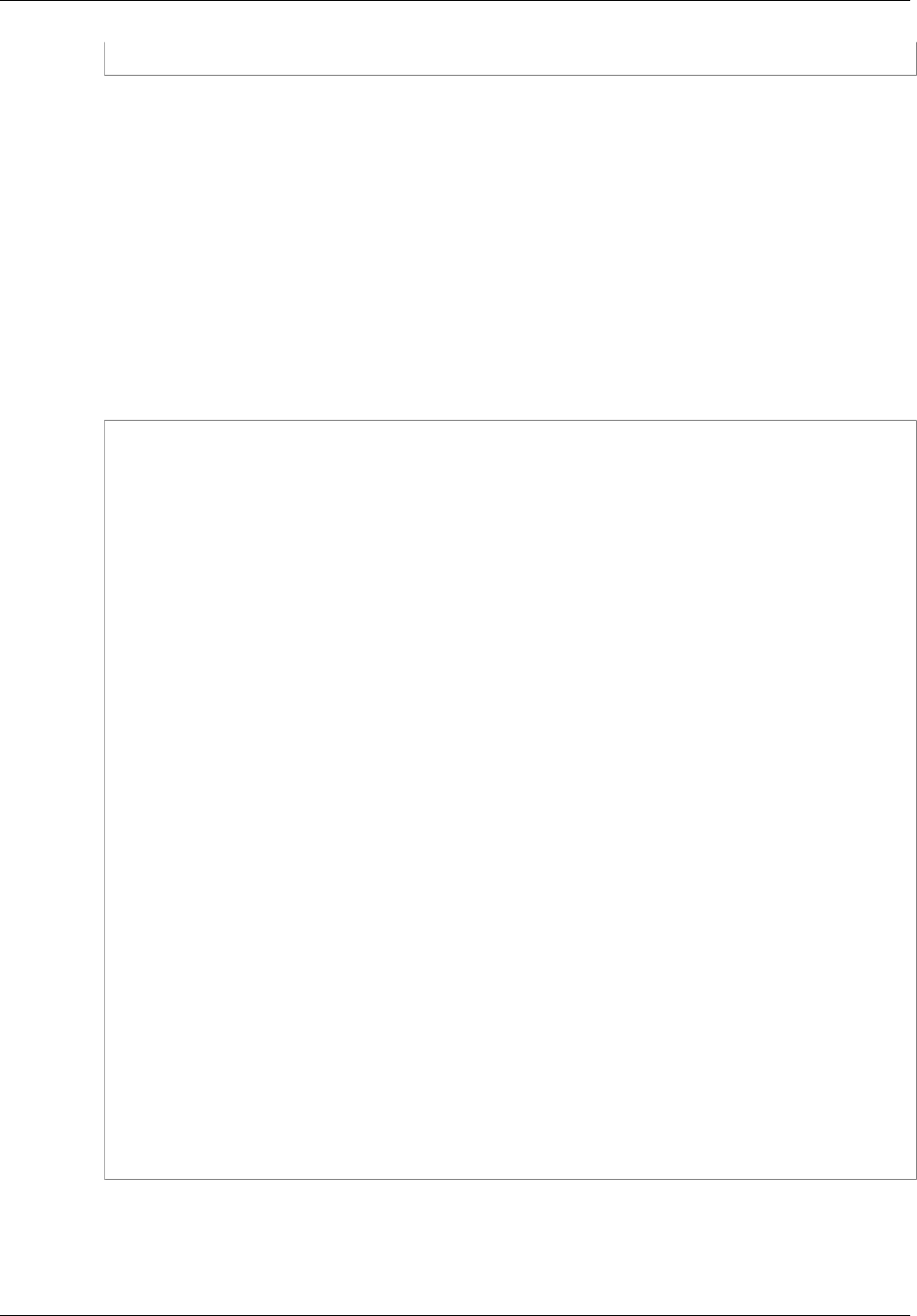

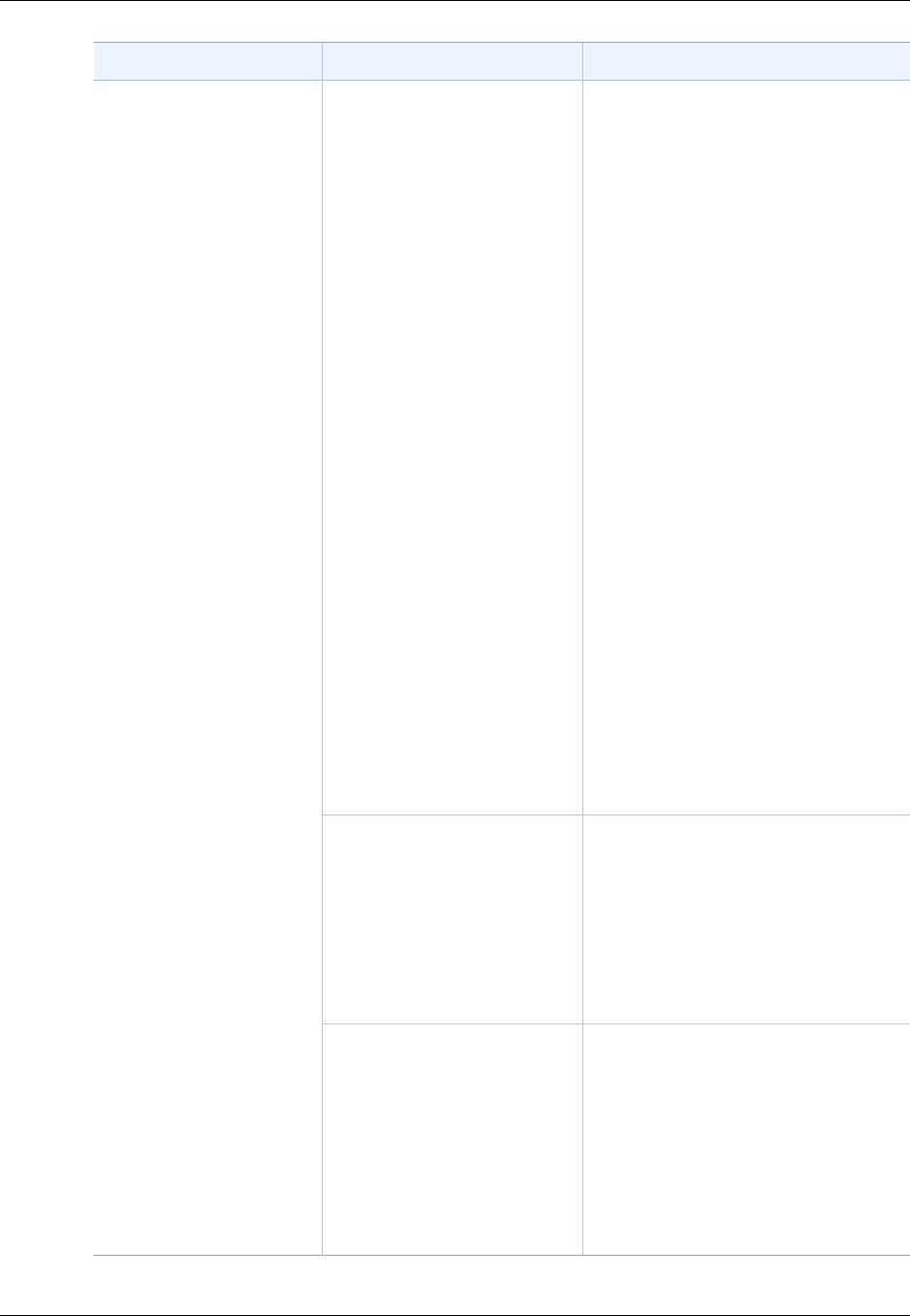

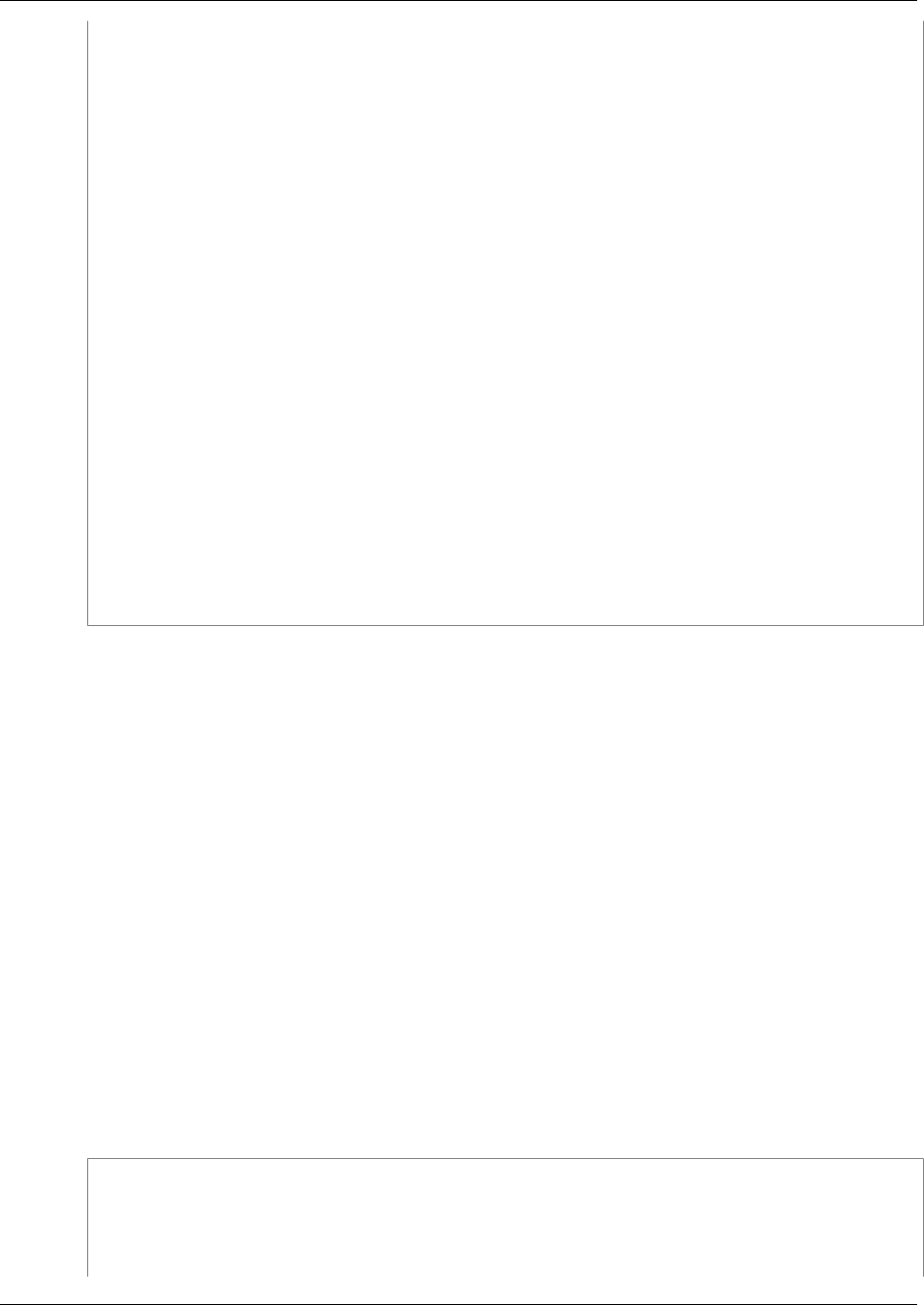

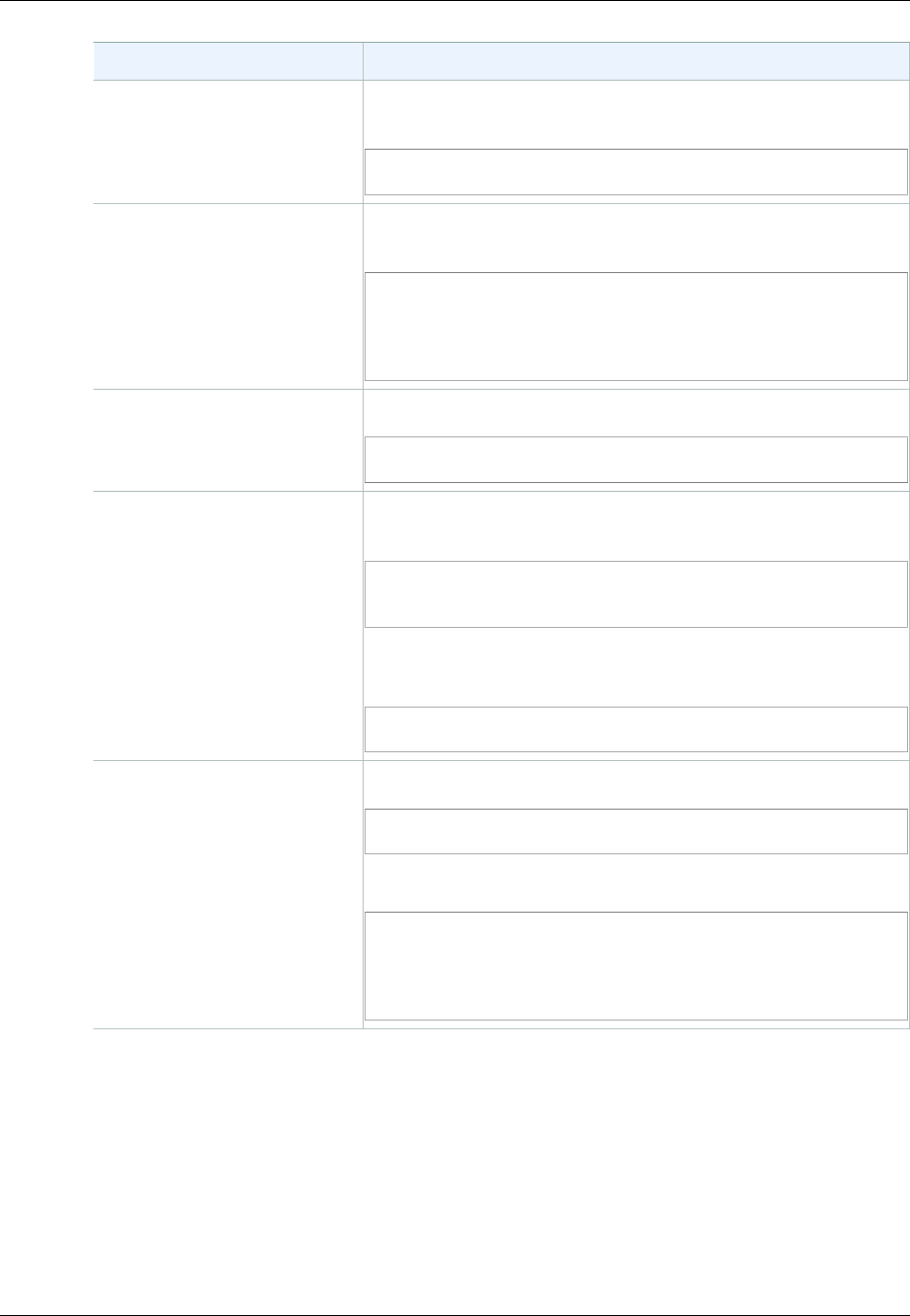

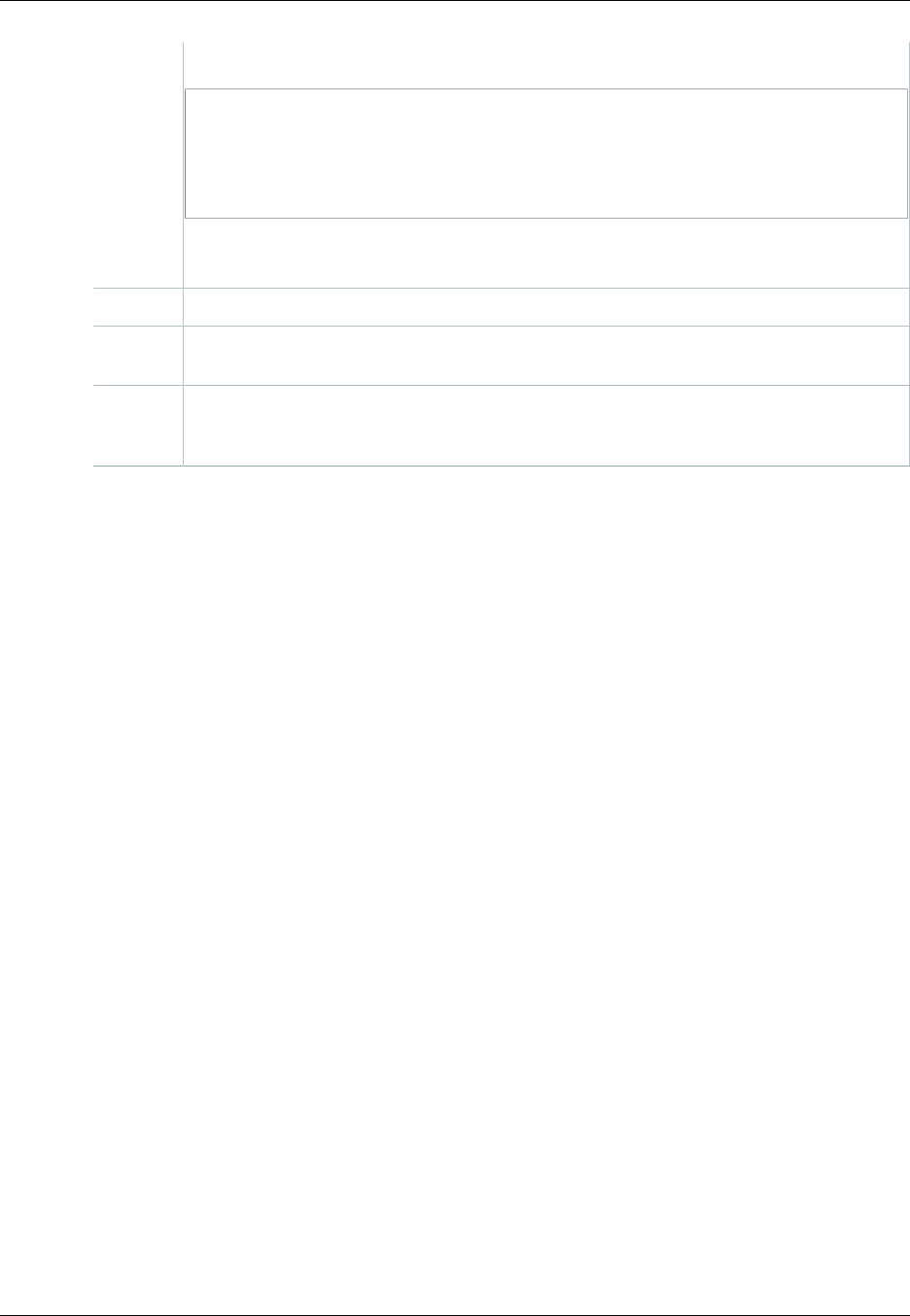

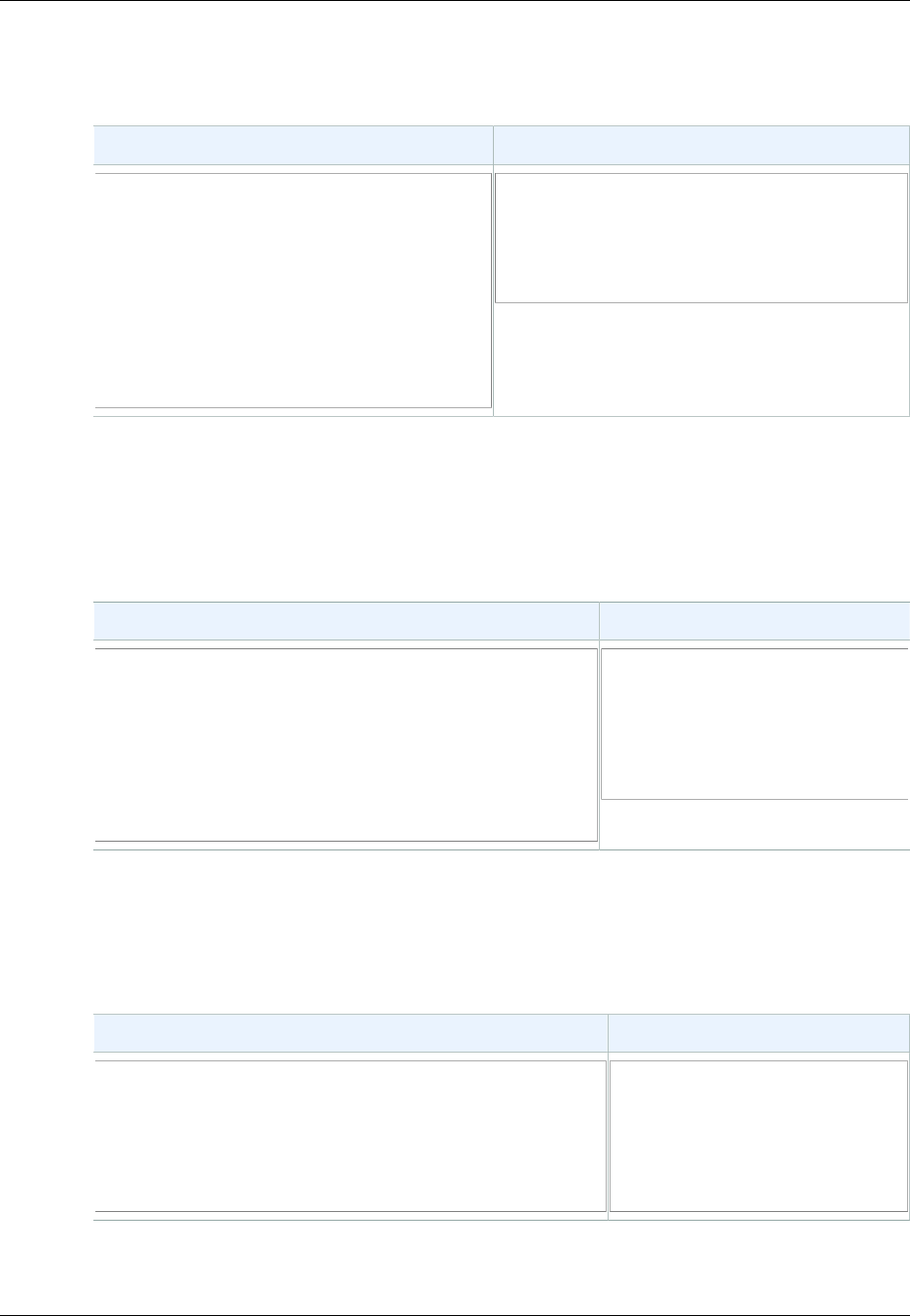

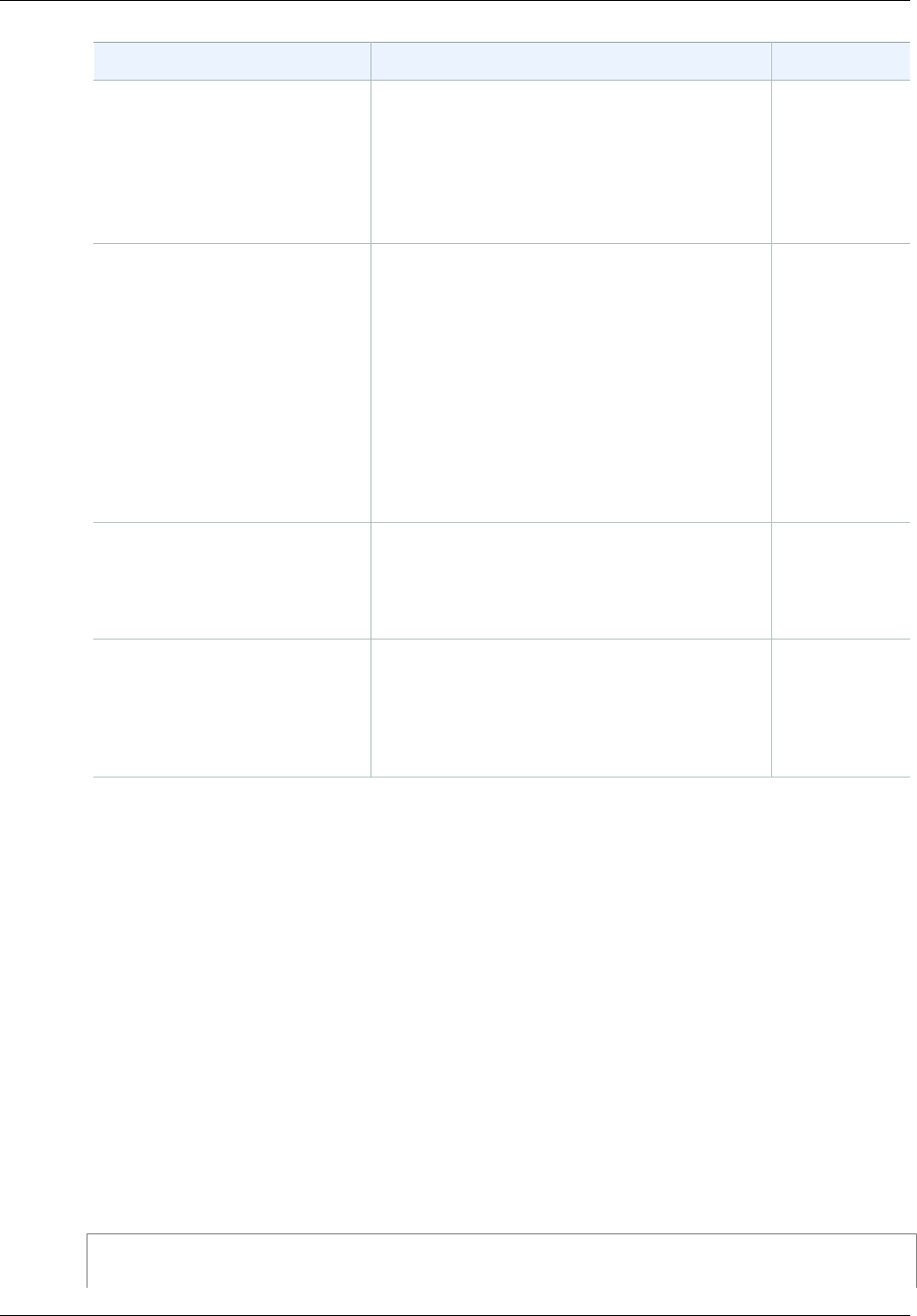

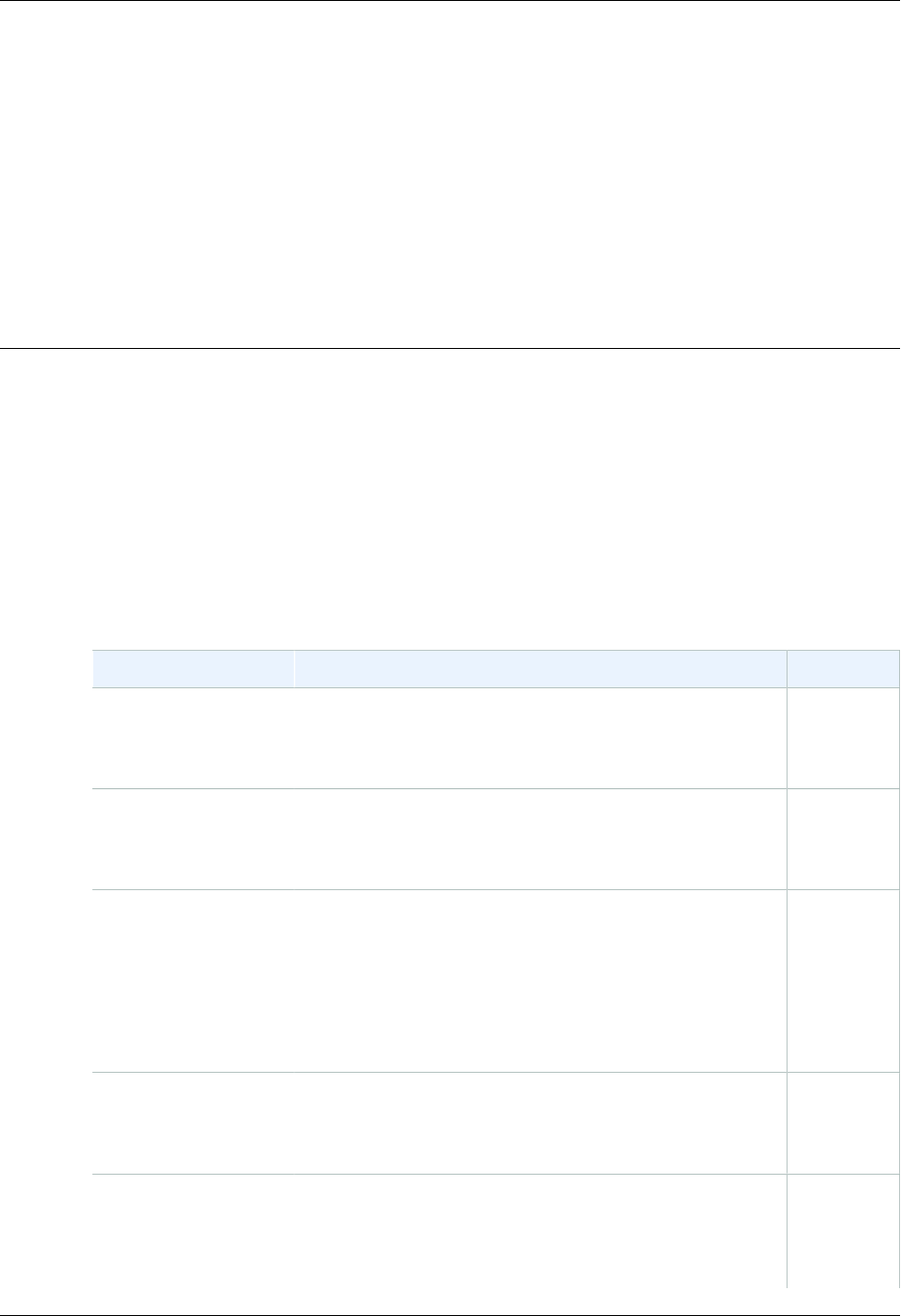

The following table describes the characteristics of eventually consistent read and consistent read.

| Eventually Consistent Read | Consistent Read |
| --- | --- |
| Stale reads possible | No stale reads |
| Lowest read latency | Potentially higher read latency |
| Highest read throughput | Potentially lower read throughput |

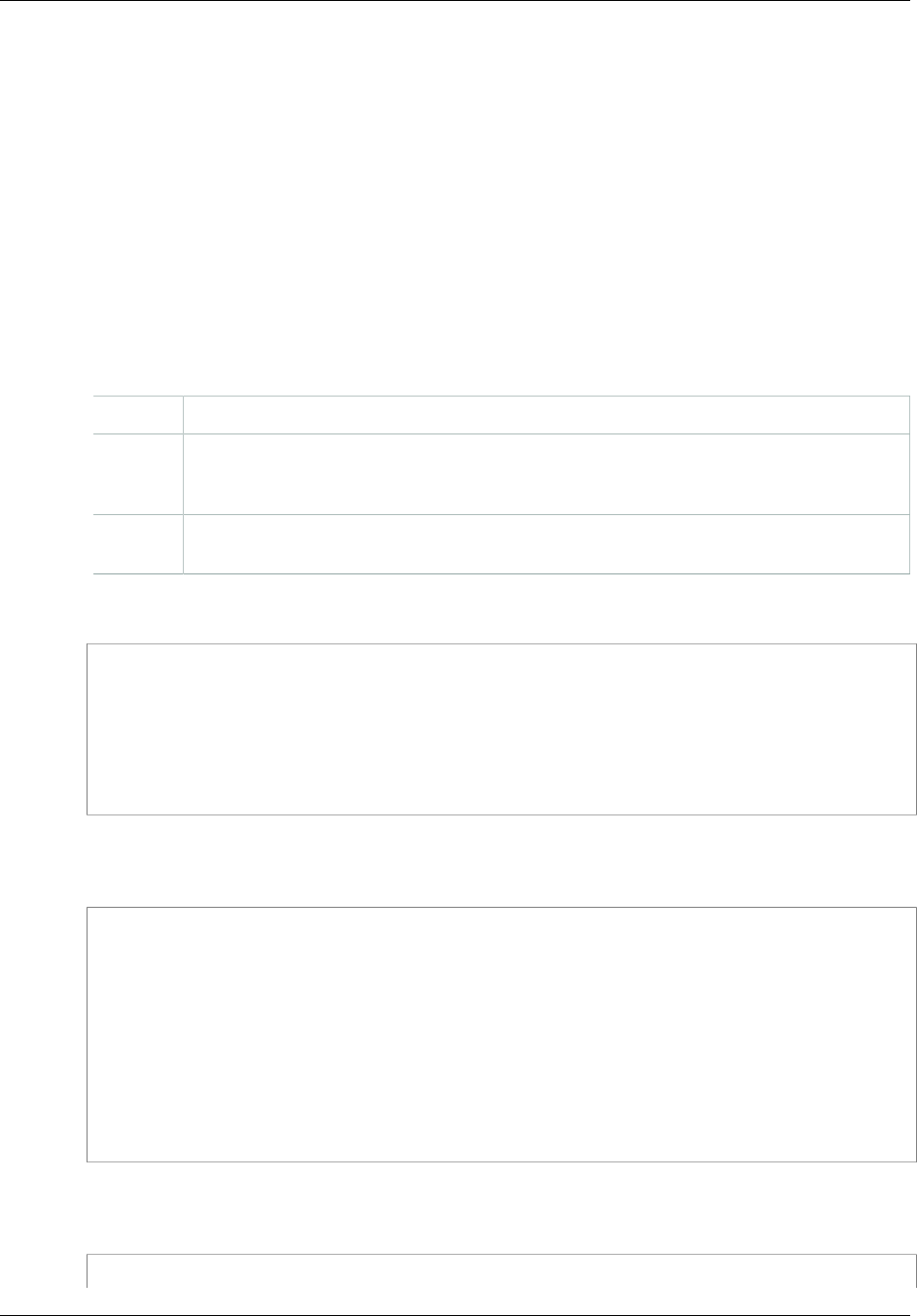

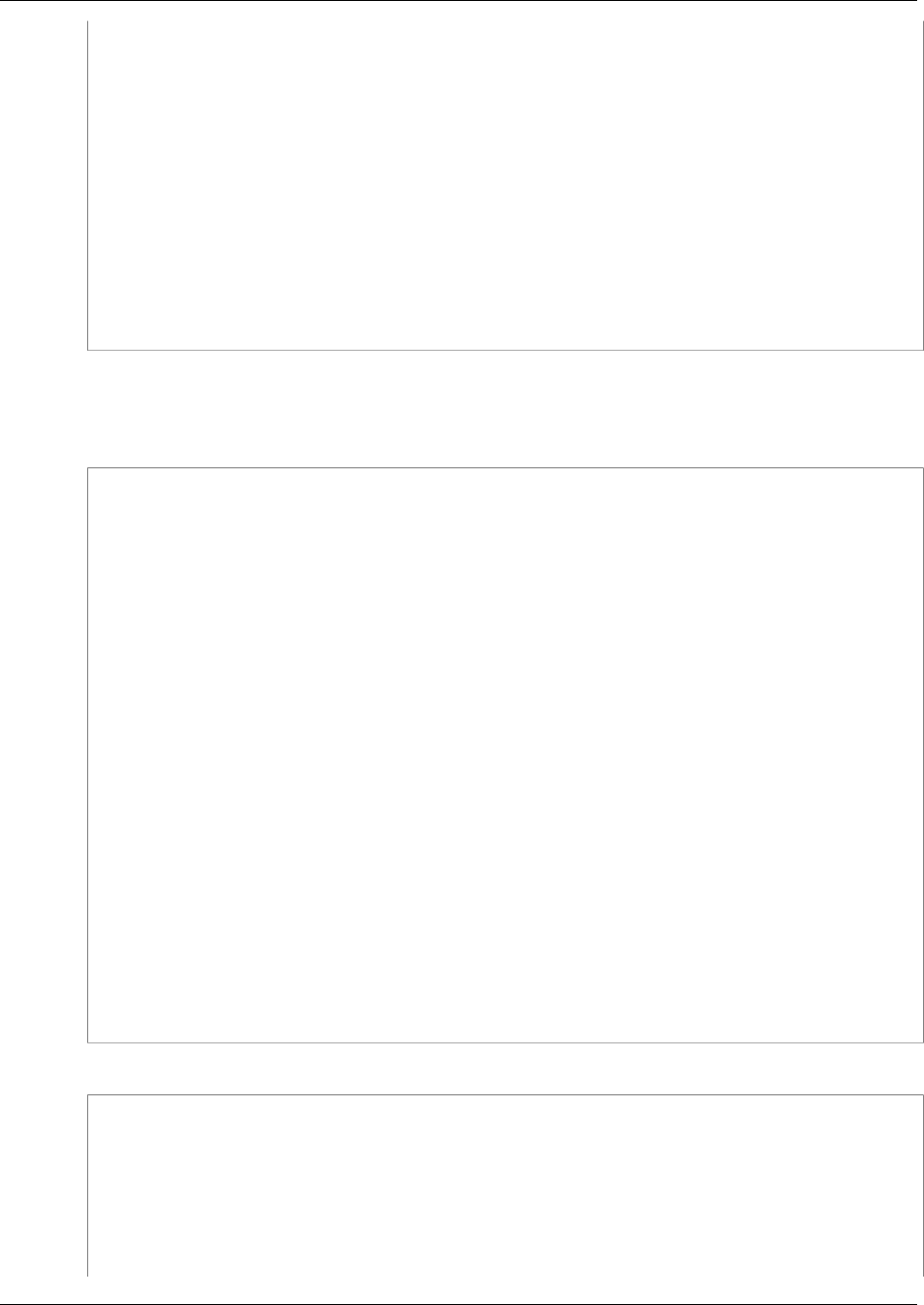

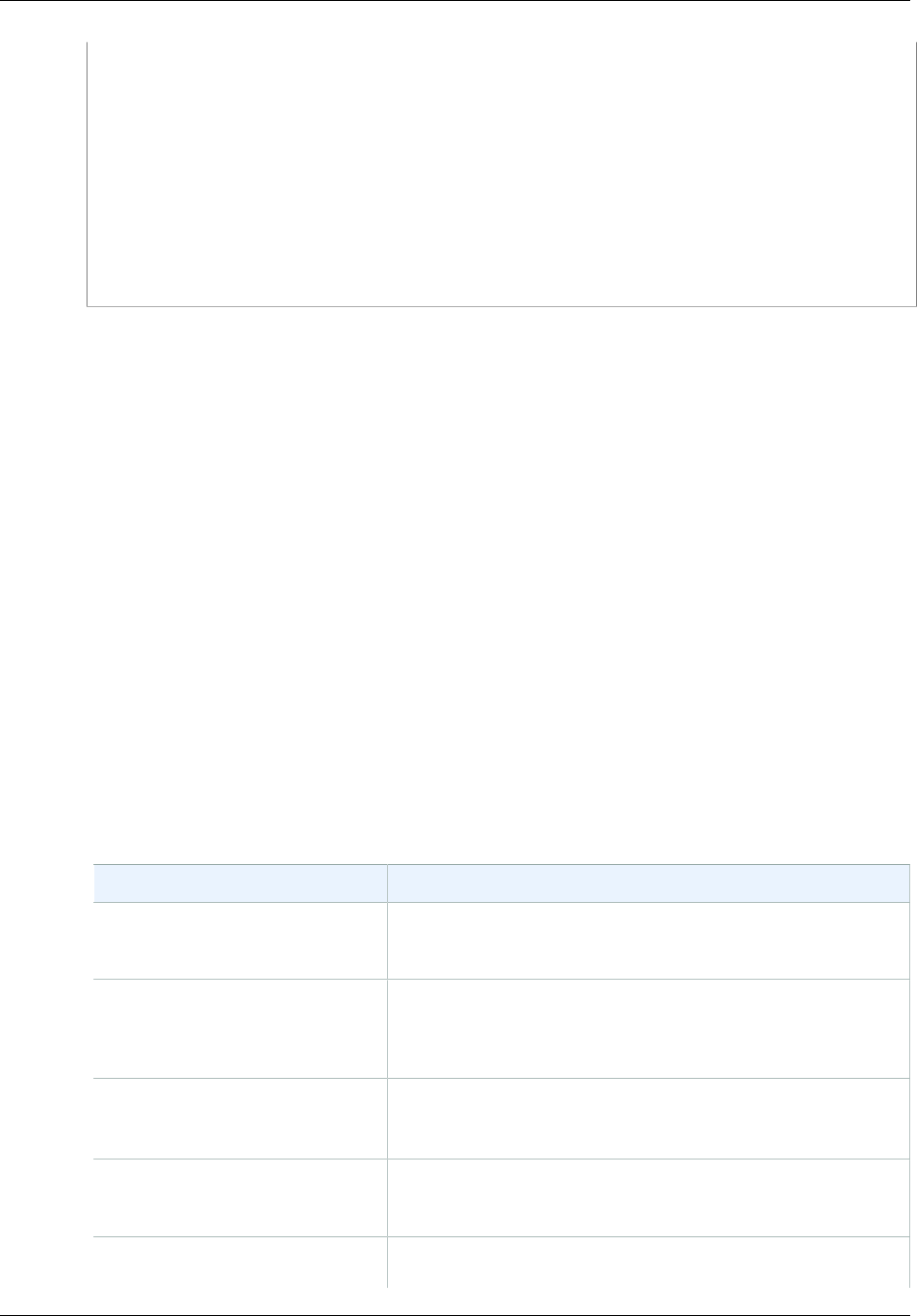

Concurrent Applications

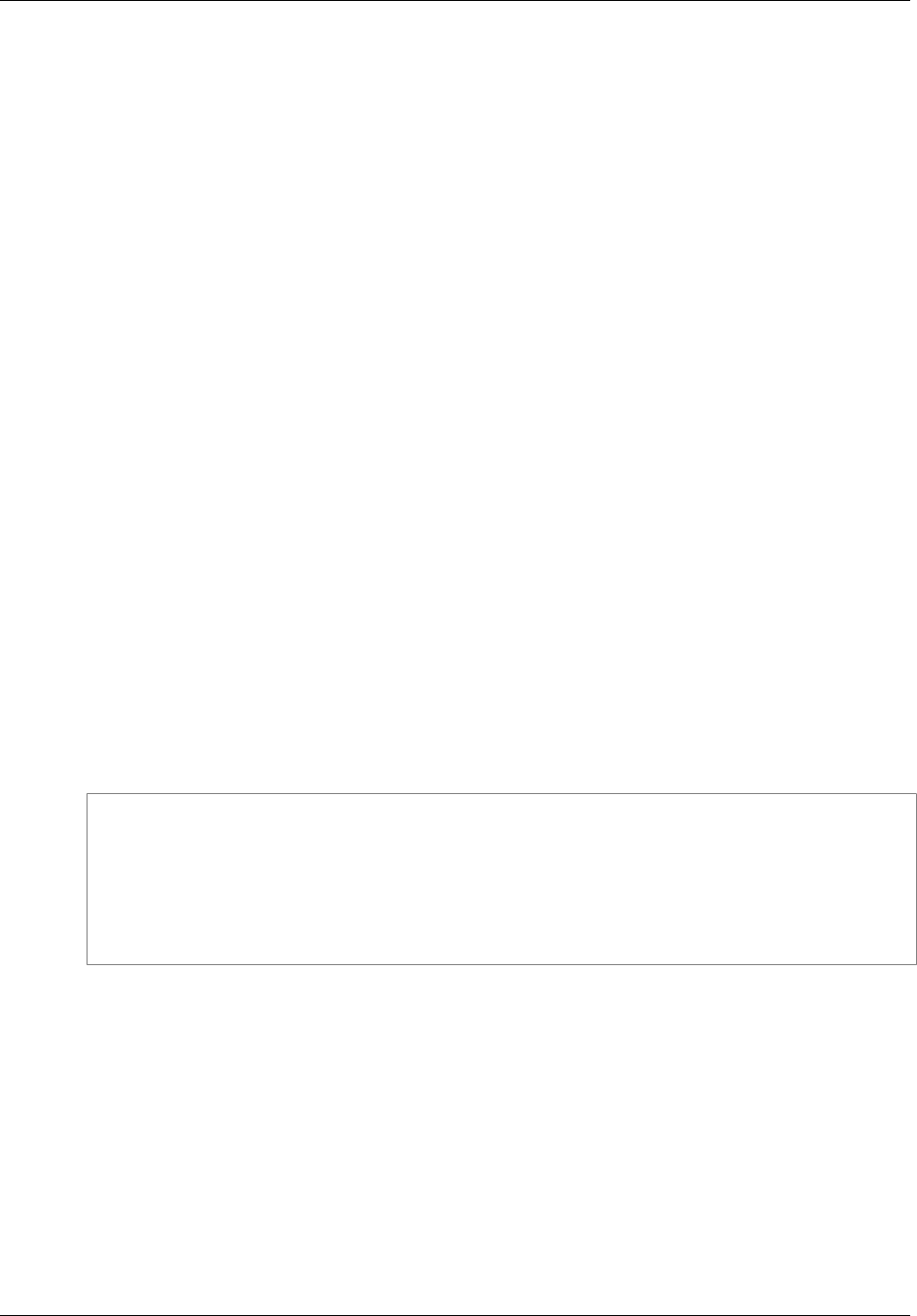

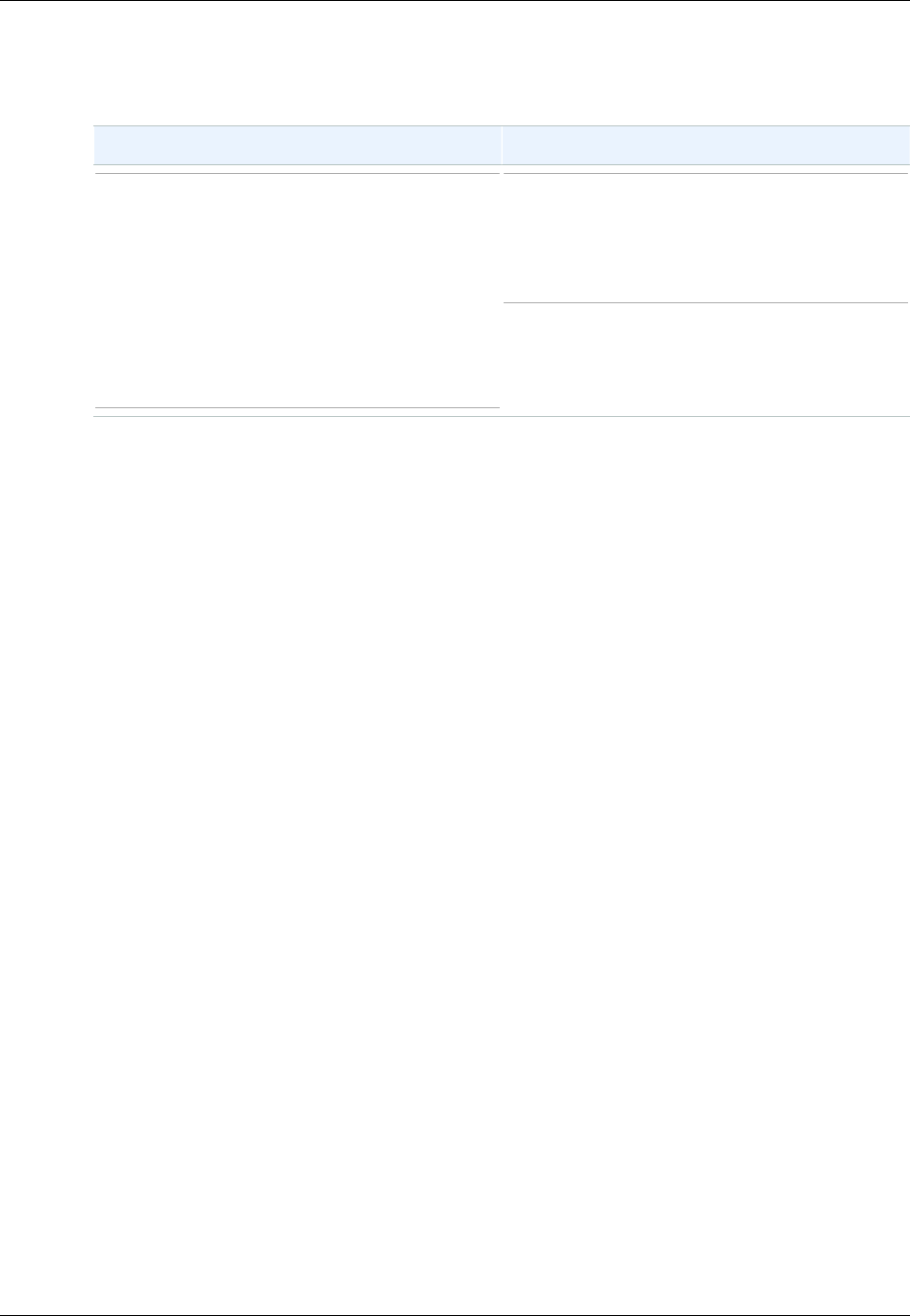

This section provides examples of eventually consistent and consistent read requests when multiple clients are writing to the same items.

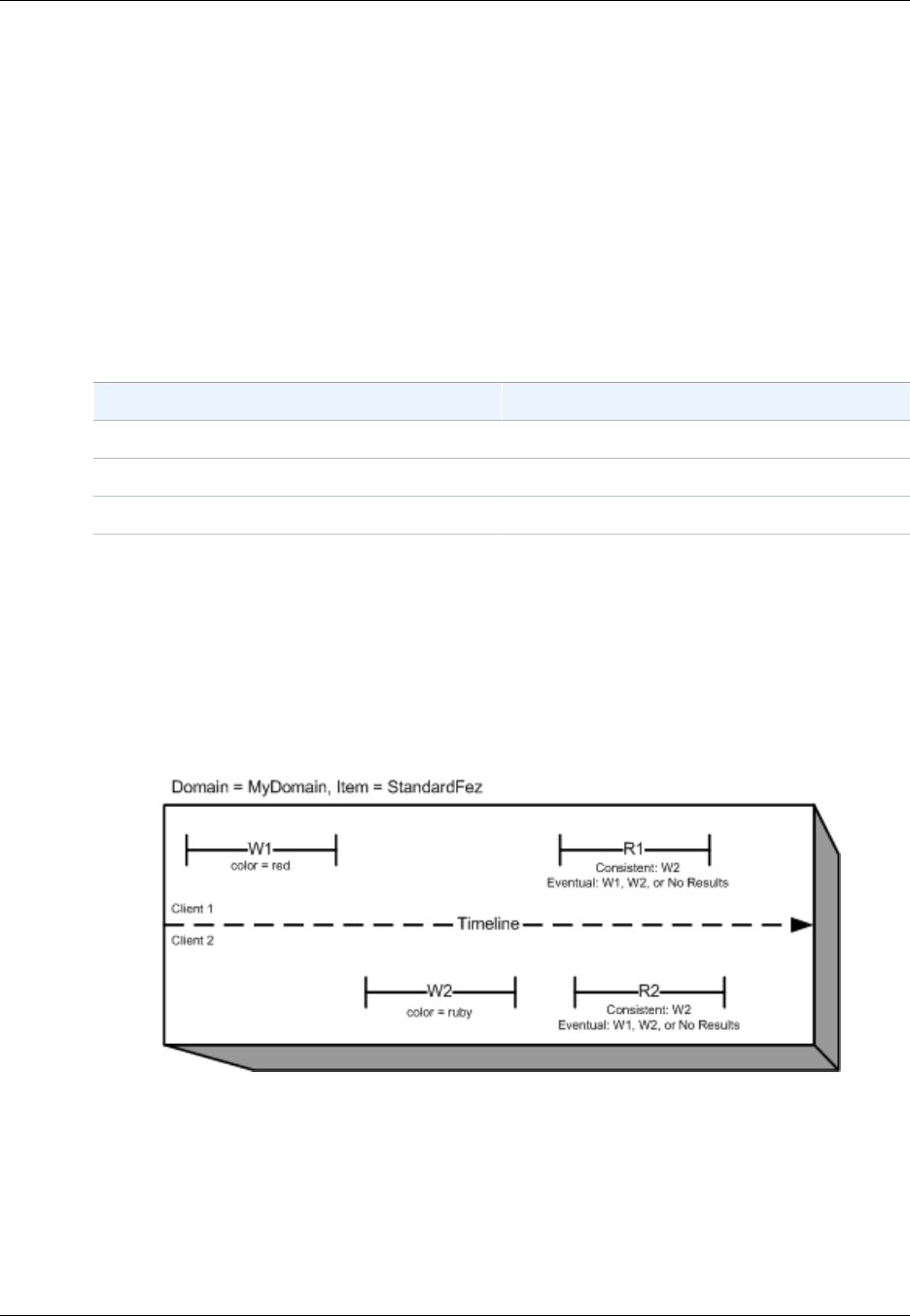

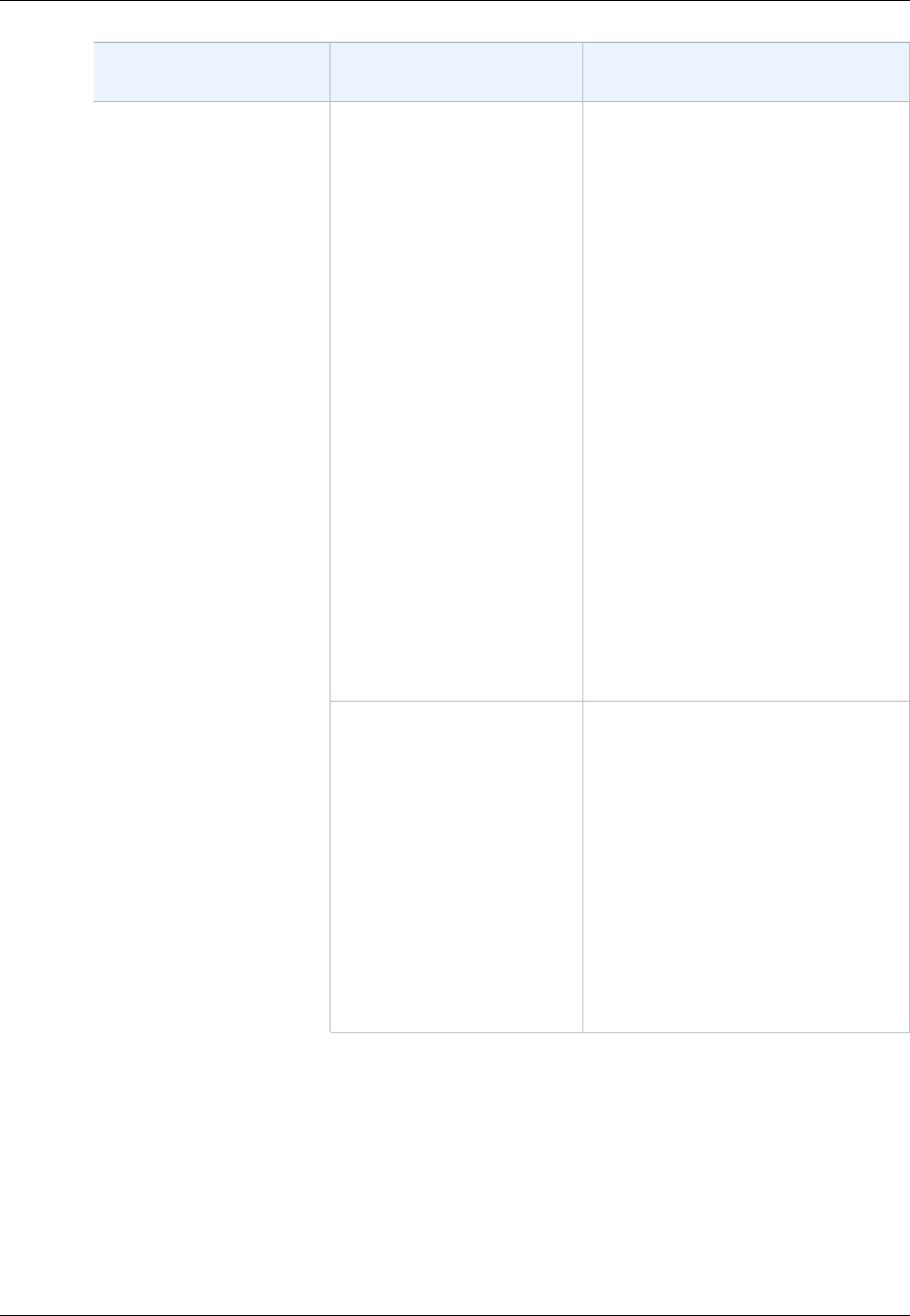

In this example, both W1 (write 1) and W2 (write 2) complete before the start of R1 (read 1) and R2 (read 2). For a consistent read, R1 and R2 both return color = ruby. For an eventually consistent read, R1 and R2 might return color = red, color = ruby, or no results, depending on the amount of time that has elapsed.

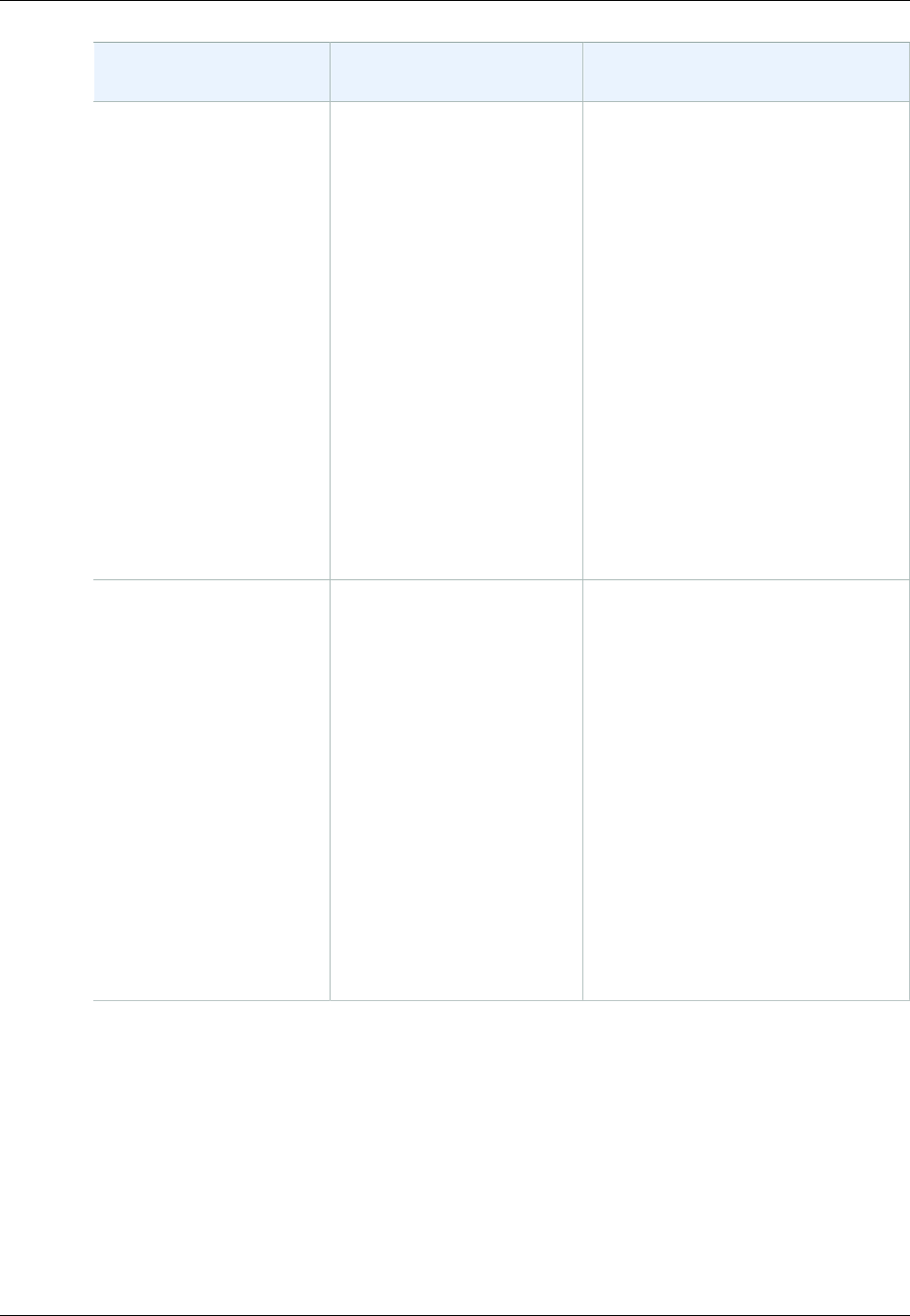

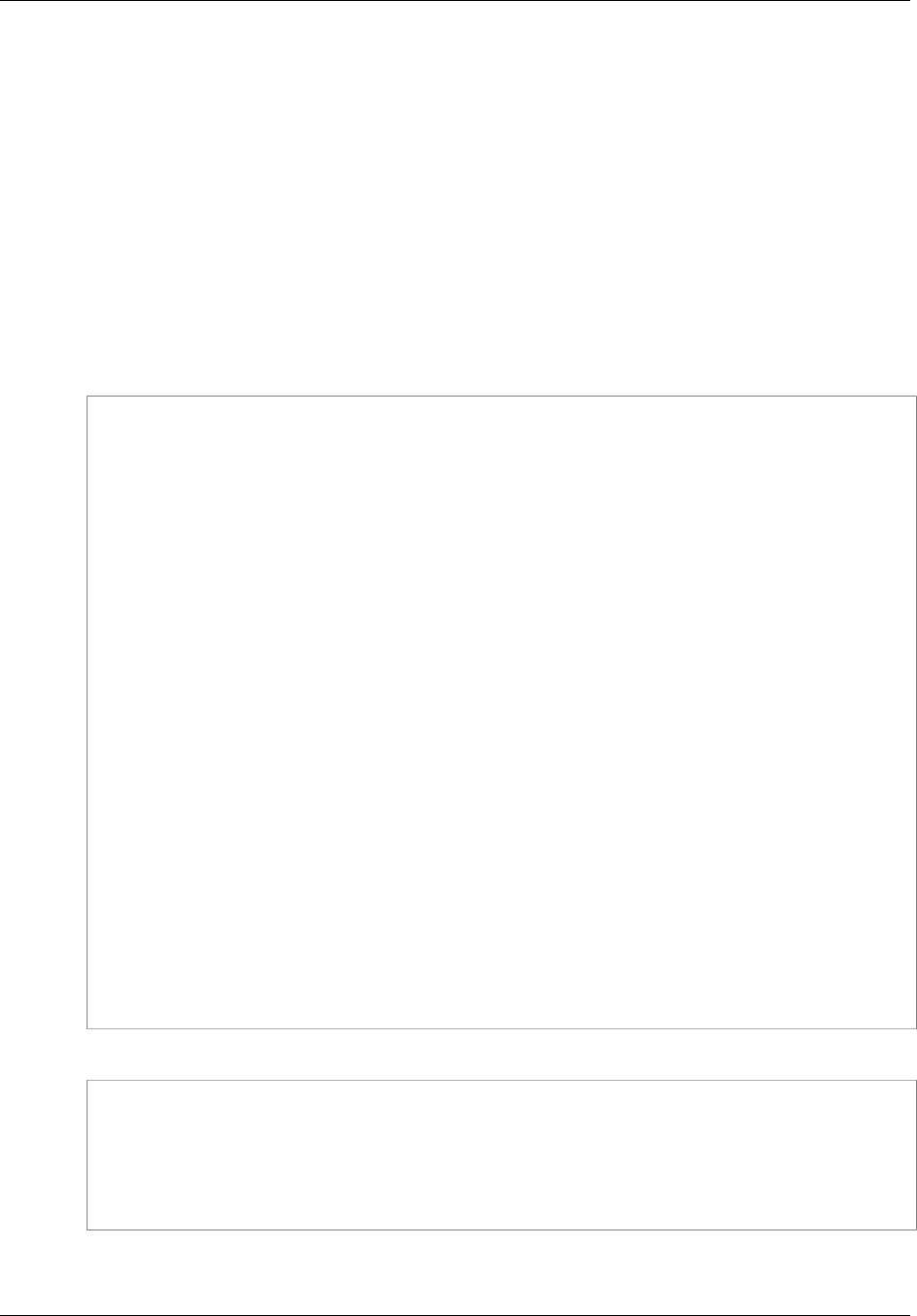

In the next example, W2 does not complete before the start of R1. Therefore, R1 might return color = ruby or color = garnet for either a consistent read or an eventually consistent read. Also, depending on the amount of time that has elapsed, an eventually consistent read might return no results.

For a consistent read, R2 returns color = garnet. For an eventually consistent read, R2 might return color = ruby, color = garnet, or no results depending on the amount of time that has elapsed.

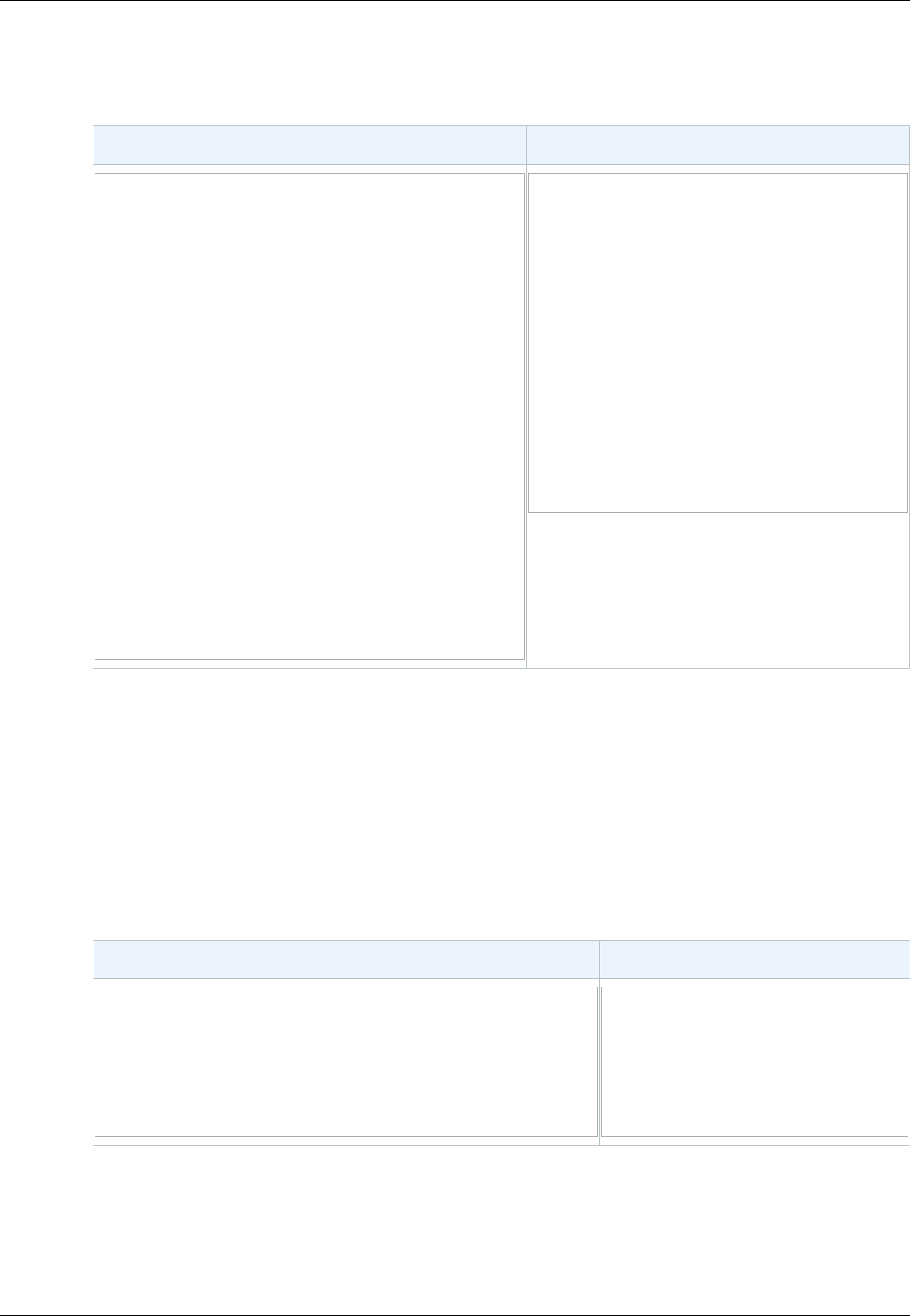

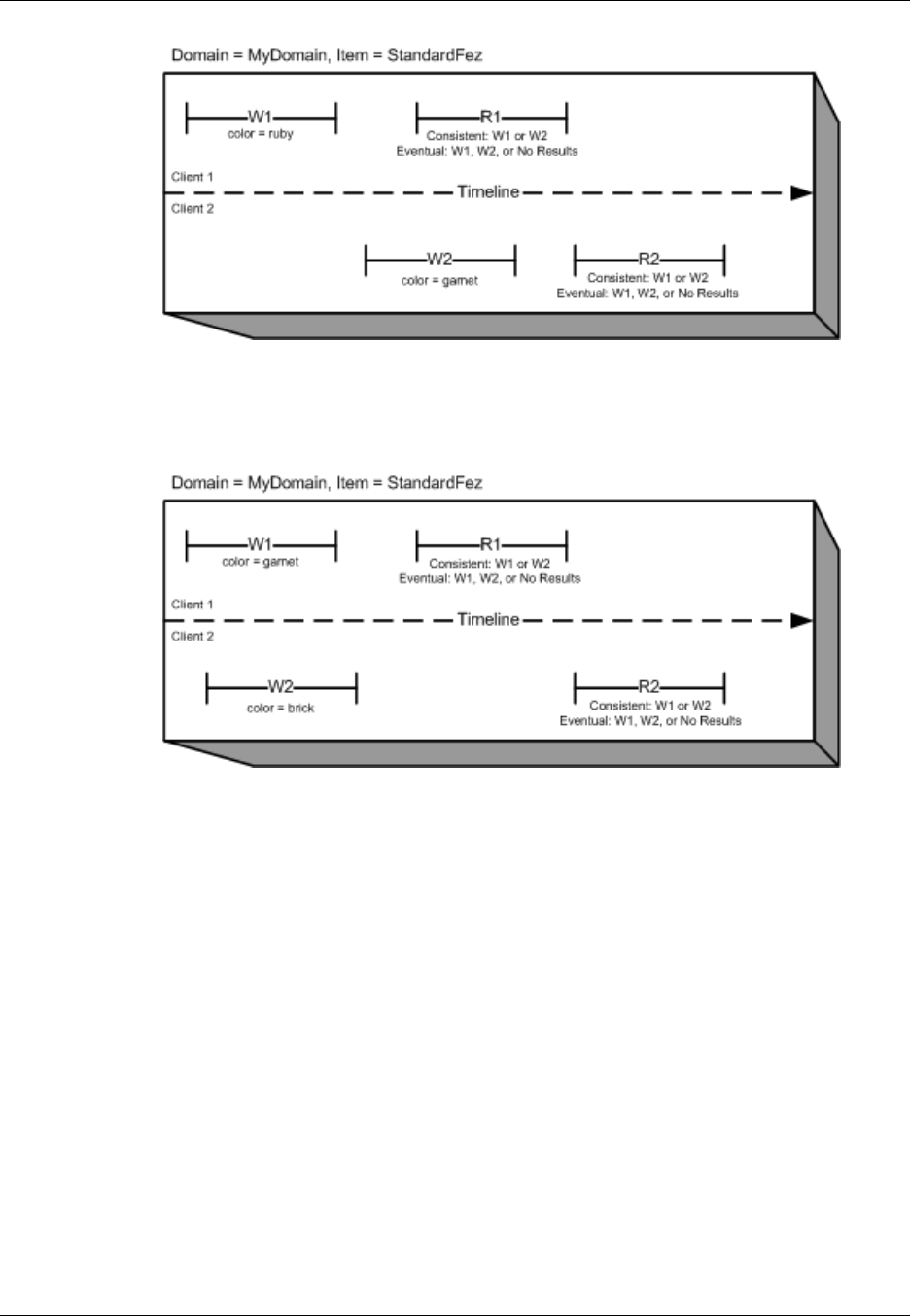

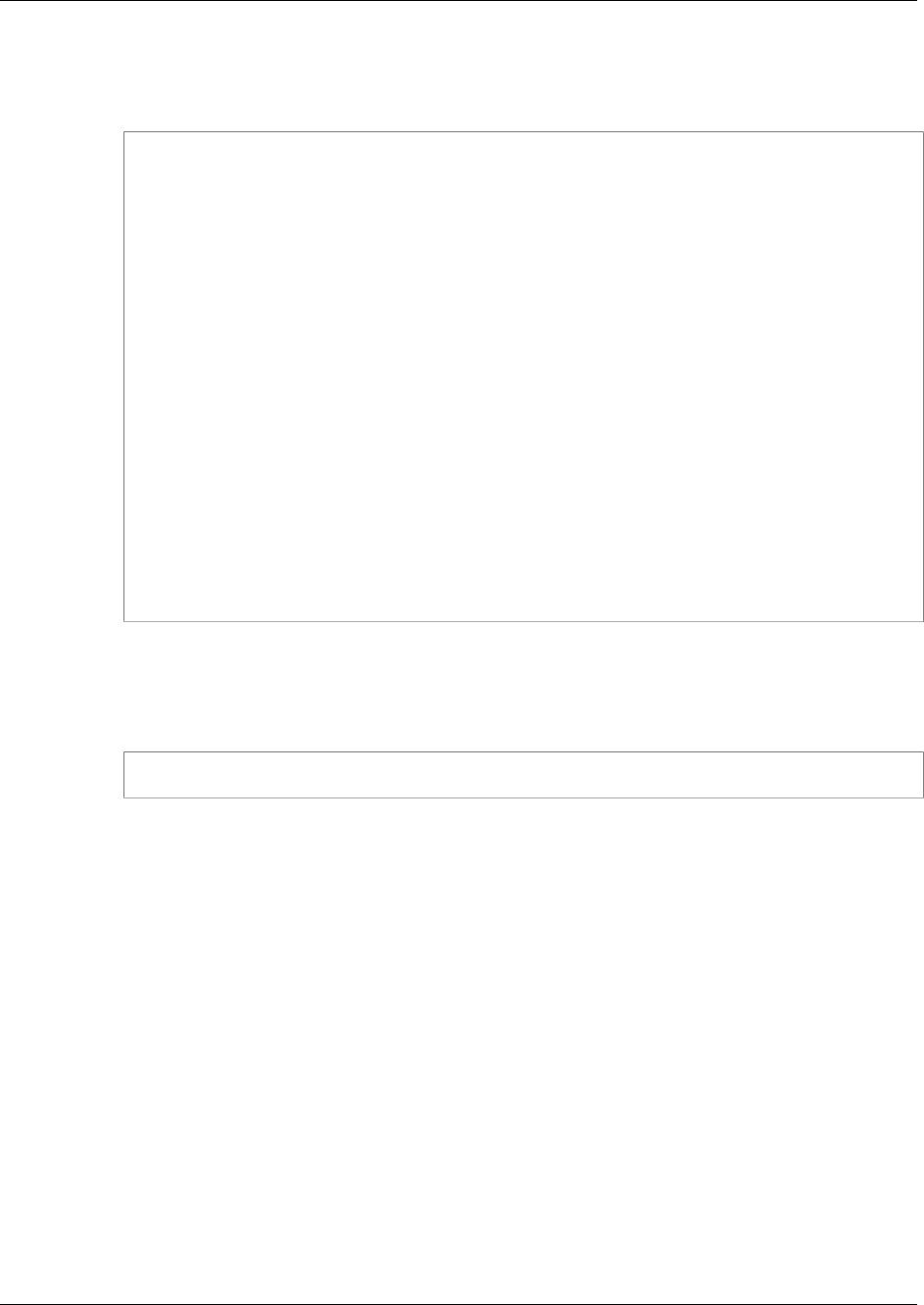

In the last example, Client 2 performs W2 before Amazon S3 returns a success for W1, so the outcome of the final value is unknown (color = garnet or color = brick). Any subsequent reads (consistent read or eventually consistent) might return either value. Also, depending on the amount of time that has elapsed, an eventually consistent read might return no results.
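
The three examples above can be checked against a small Python model that enumerates which values a read might return. The model is an interpretation of the text, not a specification: each write is reduced to a start time and an acknowledgement time, and all the numeric times below are invented for illustration because the original timing diagrams are not reproduced here. The names `Write`, `consistent_results`, and `eventual_results` are hypothetical.

```python
from typing import List, NamedTuple, Optional, Set


class Write(NamedTuple):
    start: float  # when the client sent the write (made-up time unit)
    end: float    # when the write was acknowledged as successful
    value: str


def consistent_results(writes: List[Write], read_at: float) -> Set[str]:
    """Values a consistent read starting at `read_at` might return."""
    started = [w for w in writes if w.start < read_at]
    results = set()
    for w in started:
        # w is ruled out only if some other write began after w was
        # acknowledged and was itself acknowledged before the read started.
        superseded = any(o.start > w.end and o.end <= read_at for o in started)
        if not superseded:
            results.add(w.value)
    return results


def eventual_results(writes: List[Write], read_at: float) -> Set[Optional[str]]:
    """Values an eventually consistent read might return (None = no results)."""
    results: Set[Optional[str]] = {w.value for w in writes if w.start < read_at}
    results.add(None)
    return results


# First example: W1 and W2 both complete before the reads.
first = [Write(0, 1, "red"), Write(2, 3, "ruby")]
print(consistent_results(first, read_at=4))

# Second example: W2 is still in flight when R1 starts and done before R2.
second = [Write(0, 1, "ruby"), Write(2, 5, "garnet")]
print(consistent_results(second, read_at=3), consistent_results(second, read_at=6))

# Last example: W2 starts before W1 is acknowledged.
last = [Write(0, 3, "garnet"), Write(1, 4, "brick")]
print(consistent_results(last, read_at=5))
```

Under these assumed timings, the consistent read returns only ruby in the first example, ruby or garnet for R1 and only garnet for R2 in the second, and garnet or brick in the last, while `eventual_results` additionally allows older values and no results.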

Features

Topics

• Reduced Redundancy Storage (p. 6)

• Bucket Policies (p. 7)

• AWS Identity and Access Management (p. 8)

• Access Control Lists (p. 8)

• Versioning (p. 8)

• Operations (p. 8)

This section describes important Amazon S3 features.

Reduced Redundancy Storage

Customers can store their data using the Amazon S3 Reduced Redundancy Storage (RRS) option. RRS enables customers to reduce their costs by storing non-critical, reproducible data at lower levels of redundancy than Amazon S3 standard storage. RRS provides a cost-effective, highly available solution for distributing or sharing content that is durably stored elsewhere, or for storing thumbnails, transcoded media, or other processed data that can be easily reproduced. The RRS option stores objects on multiple devices across multiple facilities, providing 400 times the durability of a typical disk drive, but does not replicate objects as many times as standard Amazon S3 storage, and thus is even more cost effective.

RRS provides 99.99% durability of objects over a given year. This durability level corresponds to an

average expected loss of 0.01% of objects annually.
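As a worked example of what this durability figure means, the arithmetic can be sketched as follows. The object count of 10,000 is a hypothetical value chosen for illustration, and `expected_annual_loss` is not part of any AWS library.

```python
def expected_annual_loss(object_count, durability):
    """Expected number of objects lost per year at the given durability."""
    return object_count * (1.0 - durability)


# 99.99% durability leaves an expected loss of 0.01% of objects per year.
rrs_loss = expected_annual_loss(10_000, 0.9999)
print(round(rrs_loss, 6))  # 1.0
```

In other words, at this durability level a store of 10,000 objects loses one object per year on average.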

AWS charges less for using RRS than for standard Amazon S3 storage. For pricing information, see

Amazon S3 Pricing.

For more information, see Storage Classes (p. 103).

Bucket Policies

Bucket policies provide centralized access control to buckets and objects based on a variety of

conditions, including Amazon S3 operations, requesters, resources, and aspects of the request

(e.g., IP address). The policies are expressed in our access policy language and enable centralized

management of permissions. The permissions attached to a bucket apply to all of the objects in that

bucket.

Individuals as well as companies can use bucket policies. When companies register with Amazon S3,

they create an account. Thereafter, the company becomes synonymous with the account. Accounts

are financially responsible for the Amazon resources they (and their employees) create. Accounts have

the power to grant bucket policy permissions and assign employees permissions based on a variety of

conditions. For example, an account could create a policy that gives a user write access:

• To a particular S3 bucket

• From an account's corporate network

• During business hours

• From an account's custom application (as identified by a user agent string)

An account can grant one application limited read and write access, but allow another to create and

delete buckets as well. An account could allow several field offices to store their daily reports in a

single bucket, allowing each office to write only to a certain set of names (e.g., "Nevada/*" or "Utah/*")

and only from the office's IP address range.
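The field-office scenario might be expressed as a bucket policy along the following lines. This is a minimal sketch: the bucket name, account ID, user name, and IP address range are hypothetical placeholders, and the helper function `office_write_policy` exists only for this illustration.

```python
import json


def office_write_policy(bucket, office_prefix, office_cidr, principal_arn):
    """Build a policy that lets one principal write under one key prefix,
    and only from one IP address range."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "AllowOfficeWrites",
                "Effect": "Allow",
                "Principal": {"AWS": principal_arn},
                "Action": "s3:PutObject",
                # Only keys that begin with the office's prefix.
                "Resource": f"arn:aws:s3:::{bucket}/{office_prefix}/*",
                # Only requests that originate from the office's network.
                "Condition": {"IpAddress": {"aws:SourceIp": office_cidr}},
            }
        ],
    }


policy = office_write_policy(
    bucket="examplebucket",
    office_prefix="Nevada",
    office_cidr="192.0.2.0/24",
    principal_arn="arn:aws:iam::111122223333:user/nevada-office",
)
print(json.dumps(policy, indent=2))
```

A second statement with the prefix "Utah" and that office's address range would cover the other office in the same bucket.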

Unlike access control lists (described below), which can add (grant) permissions only on individual

objects, policies can either add or deny permissions across all (or a subset) of objects within a bucket.

With one request an account can set the permissions of any number of objects in a bucket. An account

can use wildcards (similar to regular expression operators) on Amazon resource names (ARNs) and

other values, so that an account can control access to groups of objects that begin with a common

prefix or end with a given extension such as .html.
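To build intuition for how such wildcards select groups of objects, the matching can be approximated locally. The sketch below assumes that `*` stands for any run of characters and `?` for any single character; `arn_matches` is a hypothetical helper, not an AWS API, and Amazon S3 performs the real evaluation.

```python
import re


def arn_matches(pattern, arn):
    """Return True if arn matches a pattern that uses * and ? wildcards."""
    regex = "".join(
        ".*" if ch == "*" else "." if ch == "?" else re.escape(ch)
        for ch in pattern
    )
    return re.fullmatch(regex, arn, flags=re.DOTALL) is not None


html_objects = "arn:aws:s3:::examplebucket/*.html"
log_objects = "arn:aws:s3:::examplebucket/logs/*"

print(arn_matches(html_objects, "arn:aws:s3:::examplebucket/docs/index.html"))
print(arn_matches(log_objects, "arn:aws:s3:::examplebucket/docs/index.html"))
```

The first pattern selects every key that ends in .html regardless of prefix, while the second selects every key under the logs/ prefix.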

Only the bucket owner is allowed to associate a policy with a bucket. Policies, written in the access

policy language, allow or deny requests based on:

• Amazon S3 bucket operations (such as PUT ?acl) and object operations (such as PUT Object

or GET Object)

• Requester

• Conditions specified in the policy

An account can control access based on specific Amazon S3 operations, such as GetObject,

GetObjectVersion, DeleteObject, or DeleteBucket.

The conditions can include IP addresses, IP address ranges in CIDR notation, dates, user

agents, the HTTP referrer, and the transport (HTTP or HTTPS).

For more information, see Using Bucket Policies and User Policies (p. 308).

AWS Identity and Access Management

You can use AWS Identity and Access Management (IAM) with Amazon S3 to control the type of access a user or group of users

has to specific parts of an Amazon S3 bucket your AWS account owns.

For more information about IAM, see the following:

• Identity and Access Management (IAM)

• Getting Started

• IAM User Guide

Access Control Lists

For more information, see Managing Access with ACLs (p. 364).

Versioning

For more information, see Object Versioning (p. 106).

Operations

Following are the most common operations you'll execute through the API.

Common Operations

• Create a Bucket – Create and name your own bucket in which to store your objects.

• Write an Object – Store data by creating or overwriting an object. When you write an object, you

specify a unique key in the namespace of your bucket. This is also a good time to specify any access

control you want on the object.

• Read an Object – Read data back. You can download the data via HTTP or BitTorrent.

• Delete an Object – Delete some of your data.

• List Keys – List the keys contained in one of your buckets. You can filter the key list based on a

prefix.

These operations and all other functionality are described in detail later in this guide.

Amazon S3 Application Programming Interfaces (API)

The Amazon S3 architecture is designed to be programming language-neutral, using our supported

interfaces to store and retrieve objects.

Amazon S3 provides a REST and a SOAP interface. They are similar, but there are some differences.

For example, in the REST interface, metadata is returned in HTTP headers. Because we only support

HTTP requests of up to 4 KB (not including the body), the amount of metadata you can supply is

restricted.
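To get a feel for how much of that budget user metadata consumes, the header bytes can be estimated before sending a request. This is a rough sketch only: it assumes each metadata item travels as one `x-amz-meta-` header line and counts the name, separator, value, and line ending. How the service actually accounts for header size is not specified here, and the other headers in the request also take up space.

```python
MAX_HEADER_BYTES = 4 * 1024  # request size limit (excluding the body) from the text


def metadata_header_bytes(metadata):
    """Estimate the bytes that user metadata adds to the request headers."""
    total = 0
    for name, value in metadata.items():
        line = f"x-amz-meta-{name}: {value}\r\n"
        total += len(line.encode("utf-8"))
    return total


used = metadata_header_bytes({"color": "ruby"})
print(used, "of", MAX_HEADER_BYTES, "bytes")
```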

Note

SOAP support over HTTP is deprecated, but it is still available over HTTPS. New Amazon S3

features will not be supported for SOAP. We recommend that you use either the REST API or

the AWS SDKs.

The REST Interface

The REST API is an HTTP interface to Amazon S3. Using REST, you use standard HTTP requests to

create, fetch, and delete buckets and objects.

You can use any toolkit that supports HTTP to use the REST API. You can even use a browser to fetch

objects, as long as they are anonymously readable.
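The following sketch shows how the common operations might map onto HTTP methods and URLs. It assumes a virtual hosted-style endpoint of the form bucket.s3.amazonaws.com and a hypothetical bucket named examplebucket; `rest_request` is an illustrative helper rather than part of any SDK. Apart from reads of anonymously readable objects, real requests also need authentication headers, which are omitted here.

```python
from urllib.parse import quote, urlencode

# HTTP method for each common operation.
METHODS = {
    "create_bucket": "PUT",
    "write_object": "PUT",
    "read_object": "GET",
    "delete_object": "DELETE",
    "list_keys": "GET",
}


def rest_request(operation, bucket, key=None, prefix=None):
    """Return (method, url) for one of the common operations."""
    method = METHODS[operation]  # raises KeyError for unknown operations
    base = f"https://{bucket}.s3.amazonaws.com/"
    if operation in ("write_object", "read_object", "delete_object"):
        return method, base + quote(key)
    if operation == "list_keys" and prefix:
        return method, base + "?" + urlencode({"prefix": prefix})
    return method, base


print(rest_request("read_object", "examplebucket", key="photos/puppy.jpg"))
print(rest_request("list_keys", "examplebucket", prefix="photos/"))
```

Pasting the first URL into a browser is the equivalent of the anonymous fetch described above, provided the object is publicly readable.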

The REST API uses the standard HTTP headers and status codes, so that standard browsers and

toolkits work as expected. In some areas, we have added functionality to HTTP (for example, we

added headers to support access control). In these cases, we have done our best to add the new

functionality in a way that matches the style of standard HTTP usage.

The SOAP Interface

Note

SOAP support over HTTP is deprecated, but it is still available over HTTPS. New Amazon S3

features will not be supported for SOAP. We recommend that you use either the REST API or

the AWS SDKs.

The SOAP API provides a SOAP 1.1 interface using document literal encoding. The most common

way to use SOAP is to download the WSDL (go to http://doc.s3.amazonaws.com/2006-03-01/AmazonS3.wsdl),

use a SOAP toolkit such as Apache Axis or Microsoft .NET to create bindings, and

then write code that uses the bindings to call Amazon S3.

Paying for Amazon S3

Pricing for Amazon S3 is designed so that you don't have to plan for the storage requirements of your

application. Most storage providers force you to purchase a predetermined amount of storage and

network transfer capacity: If you exceed that capacity, your service is shut off or you are charged high

overage fees. If you do not exceed that capacity, you pay as though you used it all.

Amazon S3 charges you only for what you actually use, with no hidden fees and no overage charges.

This gives developers a variable-cost service that can grow with their business while enjoying the cost

advantages of Amazon's infrastructure.

Before storing anything in Amazon S3, you need to register with the service and provide a payment

instrument that will be charged at the end of each month. There are no set-up fees to begin using the

service. At the end of the month, your payment instrument is automatically charged for that month's

usage.

For information about paying for Amazon S3 storage, see Amazon S3 Pricing.

Related Services

Once you load your data into Amazon S3, you can use it with other services that we provide. The

following services are the ones you might use most frequently:

• Amazon Elastic Compute Cloud – This web service provides virtual compute resources in the

cloud. For more information, go to the Amazon EC2 product details page.

• Amazon EMR – This web service enables businesses, researchers, data analysts, and developers

to easily and cost-effectively process vast amounts of data. It utilizes a hosted Hadoop framework

running on the web-scale infrastructure of Amazon EC2 and Amazon S3. For more information, go to

the Amazon EMR product details page.

• AWS Import/Export – AWS Import/Export enables you to mail a storage device, such as a

RAID drive, to Amazon so that we can upload terabytes of your data into Amazon S3. For more

information, go to the AWS Import/Export Developer Guide.

Making Requests

Topics

• About Access Keys

• Request Endpoints

• Making Requests to Amazon S3 over IPv6

• Making Requests Using the AWS SDKs

• Making Requests Using the REST API

Amazon S3 is a REST service. You can send requests to Amazon S3 using the REST API directly or

through the AWS SDK wrapper libraries (see Sample Code and Libraries), which wrap the underlying

Amazon S3 REST API and simplify your programming tasks.

Every interaction with Amazon S3 is either authenticated or anonymous. Authentication is a process

of verifying the identity of the requester trying to access an Amazon Web Services (AWS) product.

Authenticated requests must include a signature value that authenticates the request sender. The

signature value is, in part, generated from the requester's AWS access keys (access key ID and secret

access key). For more information about getting access keys, see How Do I Get Security Credentials?

in the AWS General Reference.

If you are using the AWS SDK, the libraries compute the signature from the keys you provide.

However, if you make direct REST API calls in your application, you must write the code to compute

the signature and add it to the request.
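As an illustration of what computing the signature involves, the sketch below derives a signing key and signs a string, assuming AWS Signature Version 4. The secret key is the documentation example value, the date and region are hypothetical, and the string to sign is a placeholder; building the real canonical request and string to sign is covered in the REST API authentication material and is not shown here.

```python
import hashlib
import hmac


def _hmac_sha256(key, message):
    """HMAC-SHA256 of a text message under a bytes key."""
    return hmac.new(key, message.encode("utf-8"), hashlib.sha256).digest()


def sigv4_signing_key(secret_key, date_stamp, region, service="s3"):
    """Derive the Signature Version 4 signing key from the secret access key."""
    k_date = _hmac_sha256(("AWS4" + secret_key).encode("utf-8"), date_stamp)
    k_region = _hmac_sha256(k_date, region)
    k_service = _hmac_sha256(k_region, service)
    return _hmac_sha256(k_service, "aws4_request")


def sign(signing_key, string_to_sign):
    """Return the hex-encoded signature for an already built string to sign."""
    return hmac.new(
        signing_key, string_to_sign.encode("utf-8"), hashlib.sha256
    ).hexdigest()


key = sigv4_signing_key(
    "wJalrXUtnFEMI/K7MDENG/bPxRfiCYEXAMPLEKEY", "20160101", "us-east-1"
)
signature = sign(key, "placeholder string to sign")
```

The AWS SDKs perform these steps for you, which is the main reason to prefer them over hand-written signing code.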

About Access Keys

The following sections review the types of access keys that you can use to make authenticated

requests.

AWS Account Access Keys

The account access keys provide full access to the AWS resources owned by the account. The

following are examples of access keys:

• Access key ID (a 20-character, alphanumeric string). For example: AKIAIOSFODNN7EXAMPLE

• Secret access key (a 40-character string). For example: wJalrXUtnFEMI/K7MDENG/bPxRfiCYEXAMPLEKEY

The access key ID uniquely identifies an AWS account. You can use these access keys to send

authenticated requests to Amazon S3.
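A quick shape check based on the lengths given above can catch keys that were truncated or mangled when copied into a configuration file. This is only a sanity check under those stated lengths; it cannot tell whether the keys are valid, and `looks_like_access_keys` is a hypothetical helper.

```python
import re

# A 20-character alphanumeric string, as described for the access key ID.
ACCESS_KEY_ID_RE = re.compile(r"[A-Za-z0-9]{20}")


def looks_like_access_keys(access_key_id, secret_access_key):
    """Return True if both values have the expected shape."""
    id_ok = ACCESS_KEY_ID_RE.fullmatch(access_key_id) is not None
    secret_ok = len(secret_access_key) == 40
    return id_ok and secret_ok


print(looks_like_access_keys(
    "AKIAIOSFODNN7EXAMPLE",
    "wJalrXUtnFEMI/K7MDENG/bPxRfiCYEXAMPLEKEY",
))  # True
```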

IAM User Access Keys

You can create one AWS account for your company; however, there may be several employees in

the organization who need access to your organization's AWS resources. Sharing your AWS account

access keys reduces security, and creating individual AWS accounts for each employee might not

be practical. Also, you cannot easily share resources such as buckets and objects because they are

owned by different accounts. To share resources, you must grant permissions, which is additional

work.

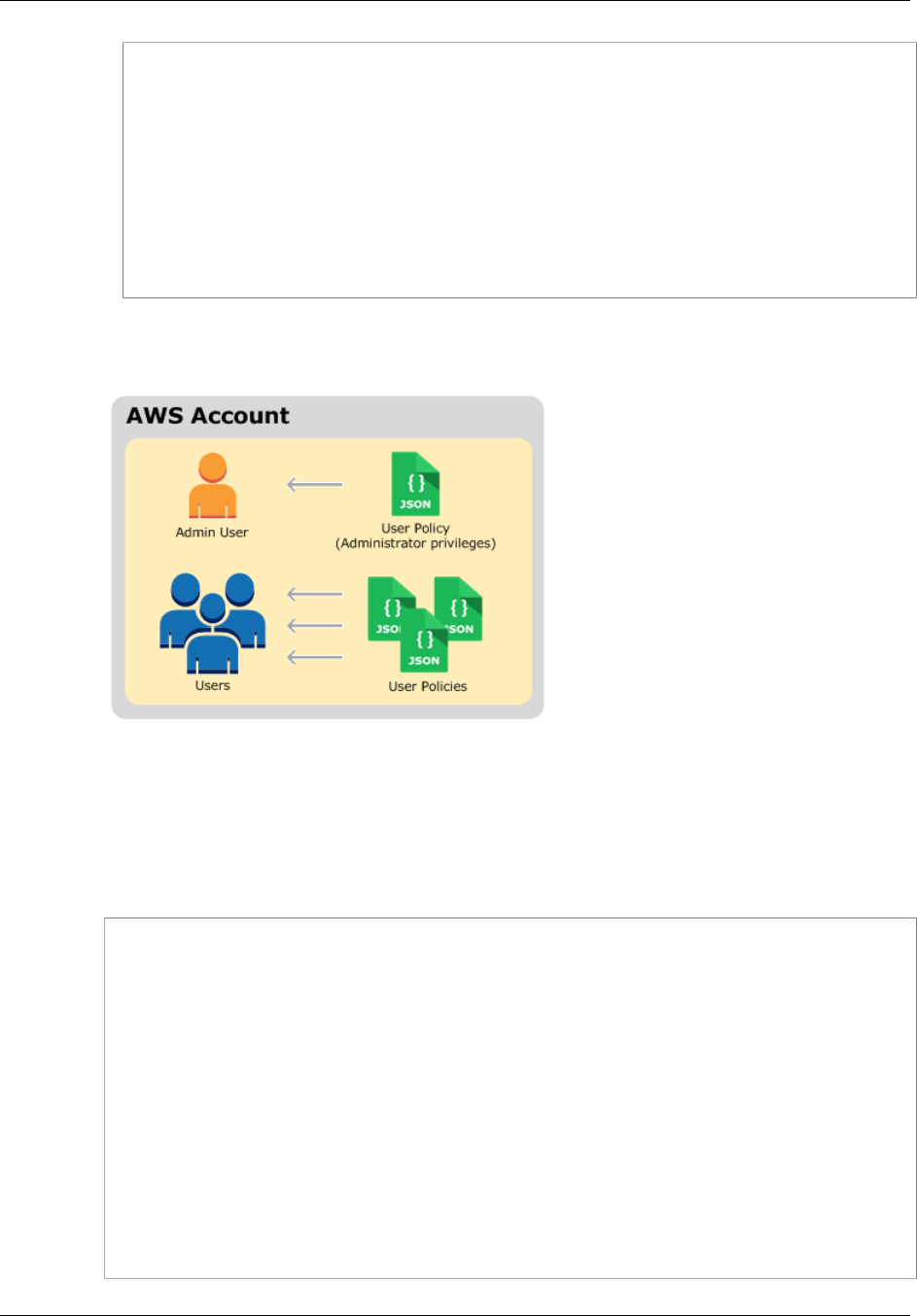

In such scenarios, you can use AWS Identity and Access Management (IAM) to create users under

your AWS account with their own access keys and attach IAM user policies granting appropriate

resource access permissions to them. To better manage these users, IAM enables you to create

groups of users and grant group-level permissions that apply to all users in that group.
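A policy attached to an IAM user or group might look like the following sketch, which grants read-only access to a single bucket. The bucket name is a hypothetical placeholder and `read_only_bucket_policy` is an illustrative helper. Because the policy is attached to the user or group it applies to, it carries no Principal element.

```python
import json


def read_only_bucket_policy(bucket):
    """Build a user or group policy that allows listing and reading a bucket."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                # Listing keys is a bucket-level permission.
                "Effect": "Allow",
                "Action": "s3:ListBucket",
                "Resource": f"arn:aws:s3:::{bucket}",
            },
            {
                # Reading objects is an object-level permission.
                "Effect": "Allow",
                "Action": "s3:GetObject",
                "Resource": f"arn:aws:s3:::{bucket}/*",
            },
        ],
    }


group_policy = read_only_bucket_policy("examplebucket")
print(json.dumps(group_policy, indent=2))
```

Attaching one such policy to a group gives every user in that group the same access, so permissions do not need to be repeated per user.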

These users are referred to as IAM users, which you create and manage within AWS. The parent account

controls a user's ability to access AWS. Any resources an IAM user creates are under the control of

and paid for by the parent AWS account. These IAM users can send authenticated requests to Amazon

S3 using their own security credentials. For more information about creating and managing users

under your AWS account, go to the AWS Identity and Access Management product details page.

Temporary Security Credentials

In addition to creating IAM users with their own access keys, IAM also enables you to grant temporary

security credentials (temporary access keys and a security token) to any IAM user to enable them

to access your AWS services and resources. You can also manage users in your system outside

AWS. These are referred to as federated users. Additionally, users can be applications that you create to

access your AWS resources.

IAM provides the AWS Security Token Service API for you to request temporary security credentials.

You can use either the AWS STS API or the AWS SDK to request these credentials. The API returns

temporary security credentials (access key ID and secret access key), and a security token. These

credentials are valid only for the duration you specify when you request them. You use the access key

ID and secret key the same way you use them when sending requests using your AWS account or IAM

user access keys. In addition, you must include the token in each request you send to Amazon S3.
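When calling the REST API directly, the token travels in the `x-amz-security-token` request header. The sketch below assumes the credentials are held in a dictionary that uses the field names of an AWS STS response (AccessKeyId, SecretAccessKey, SessionToken, Expiration); the values are placeholders and `security_token_headers` is a hypothetical helper. Signing with the temporary access key ID and secret key is not shown.

```python
from datetime import datetime, timedelta, timezone


def security_token_headers(credentials, now=None):
    """Return the extra header that carries the temporary security token."""
    now = now or datetime.now(timezone.utc)
    # Credentials are valid only for the duration requested.
    if now >= credentials["Expiration"]:
        raise ValueError("temporary security credentials have expired")
    return {"x-amz-security-token": credentials["SessionToken"]}


# Placeholder values; real ones come from an AWS STS response.
credentials = {
    "AccessKeyId": "ASIAEXAMPLEKEYID",
    "SecretAccessKey": "example-secret-access-key",
    "SessionToken": "example-session-token",
    "Expiration": datetime.now(timezone.utc) + timedelta(hours=1),
}
headers = security_token_headers(credentials)
```

Checking the expiration before each request lets an application request fresh credentials instead of sending a request that will be rejected.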

An IAM user can request these temporary security credentials for their own use or hand them out to

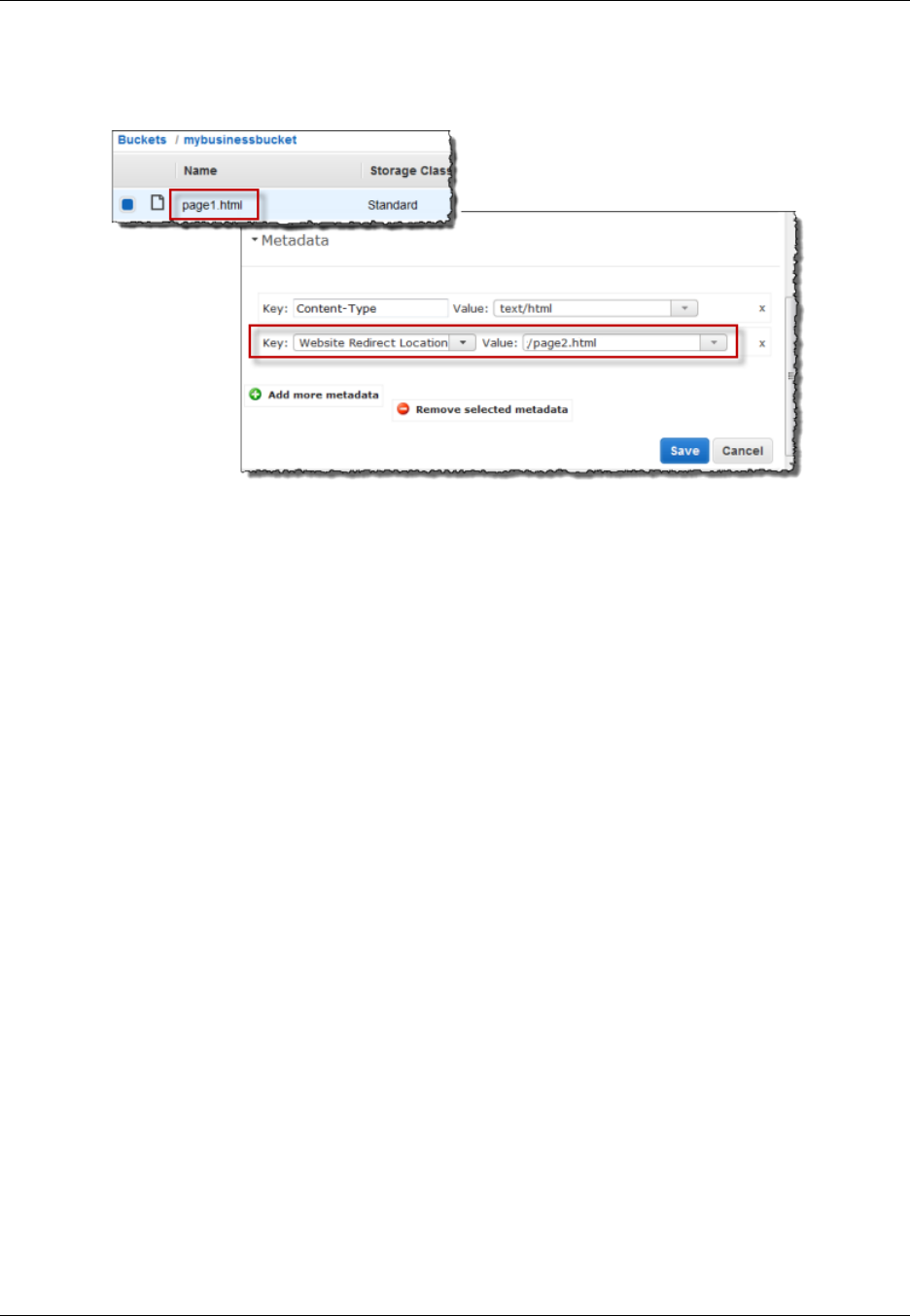

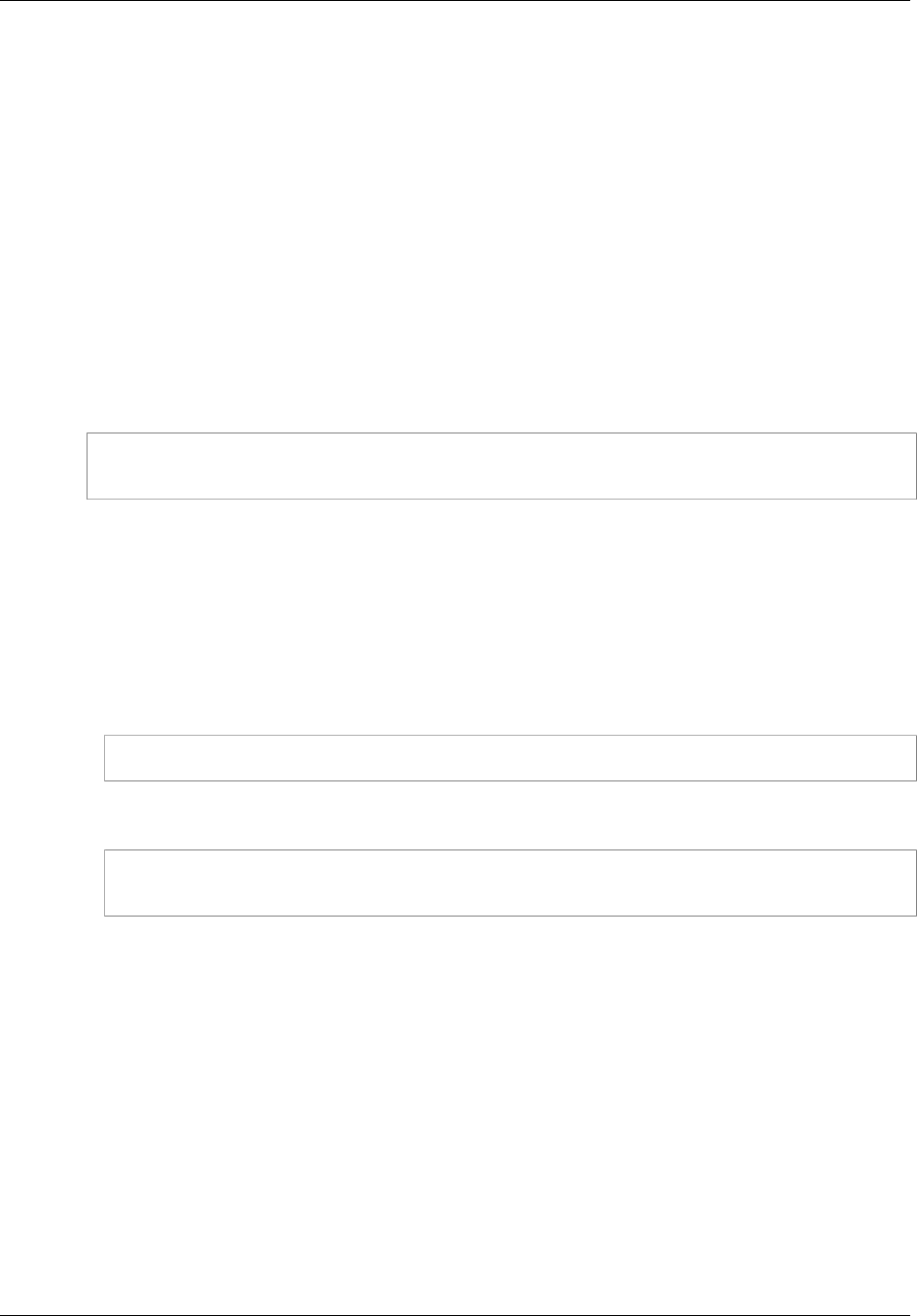

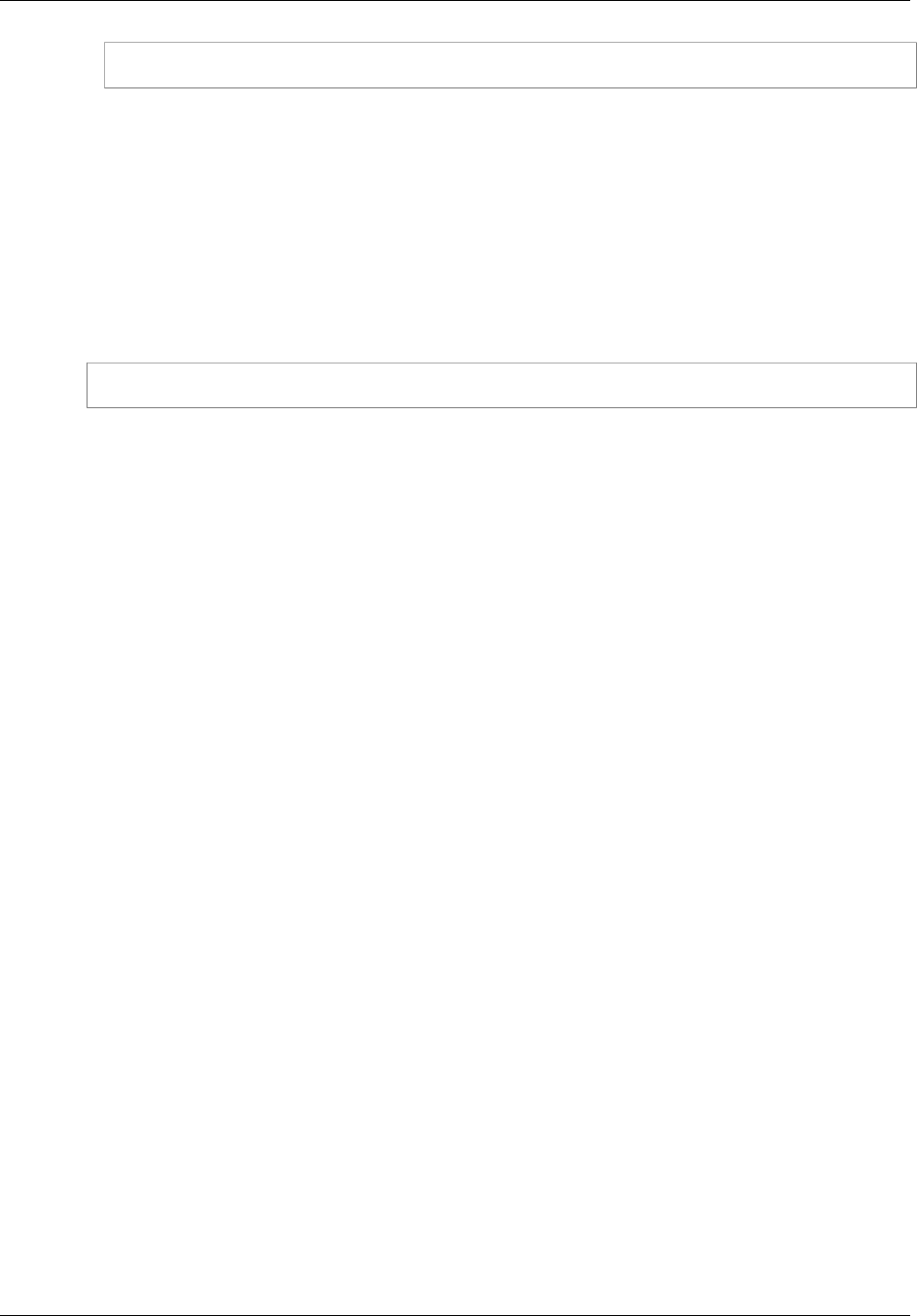

federated users or applications. When requesting temporary security credentials for federated users,