Android.NDK.Beginner.Guide


Page Count: 436

- Cover

- Copyright

- Credits

- About the Author

- About the Reviewers

- www.PacktPub.com

- Table of Contents

- Preface

- Chapter 1: Setting Up your Environment

- Getting started with Android development

- Setting up Windows

- Time for action – preparing Windows for Android development

- Installing Android development kits on Windows

- Time for action – installing Android SDK and NDK on Windows

- Setting up Mac OS X

- Time for action – preparing Mac OS X for Android development

- Installing Android development kits on Mac OS X

- Time for action – installing Android SDK and NDK on Mac OS X

- Setting up Linux

- Time for action – preparing Ubuntu Linux for Android development

- Installing Android development kits on Linux

- Time for action – installing Android SDK and NDK on Ubuntu

- Setting up the Eclipse development environment

- Time for action – installing Eclipse

- Emulating Android

- Time for action – creating an Android virtual device

- Developing with an Android device on Windows and Mac OS X

- Time for action – setting up your Android device on Windows and Mac OS X

- Developing with an Android device on Linux

- Time for action – setting up your Android device on Ubuntu

- Troubleshooting a development device

- Summary

- Chapter 2: Creating, Compiling, and

Deploying Native Projects

- Compiling and deploying NDK sample applications

- Time for action – compiling and deploying the hellojni sample

- Exploring Android SDK tools

- Creating your first Android project using eclipse

- Time for action – initiating a Java project

- Interfacing Java with C/C++

- Time for action – calling C code from Java

- Compiling native code from Eclipse

- Time for action – creating a hybrid Java/C/C++ project

- Summary

- Chapter 3: Interfacing Java and C/C++ with JNI

- Working with Java primitives

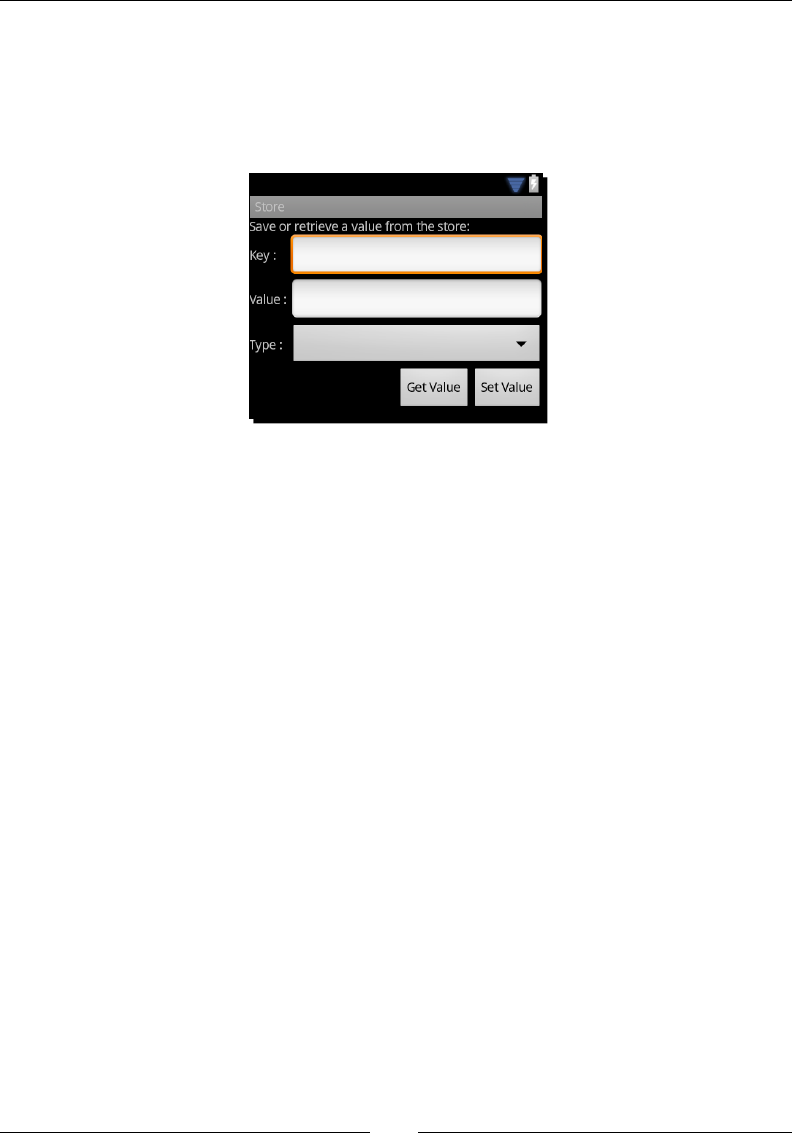

- Time for action – building a native key/value store

- Referencing Java objects from native code

- Time for action – saving a reference to an object in the Store

- Throwing exceptions from native code

- Time for action – raising exceptions from the Store

- Handling Java arrays

- Time for action – saving a reference to an object in the Store

- Summary

- Chapter 4: Calling Java Back from Native Code

- Chapter 5: Writing a Fully-native Application

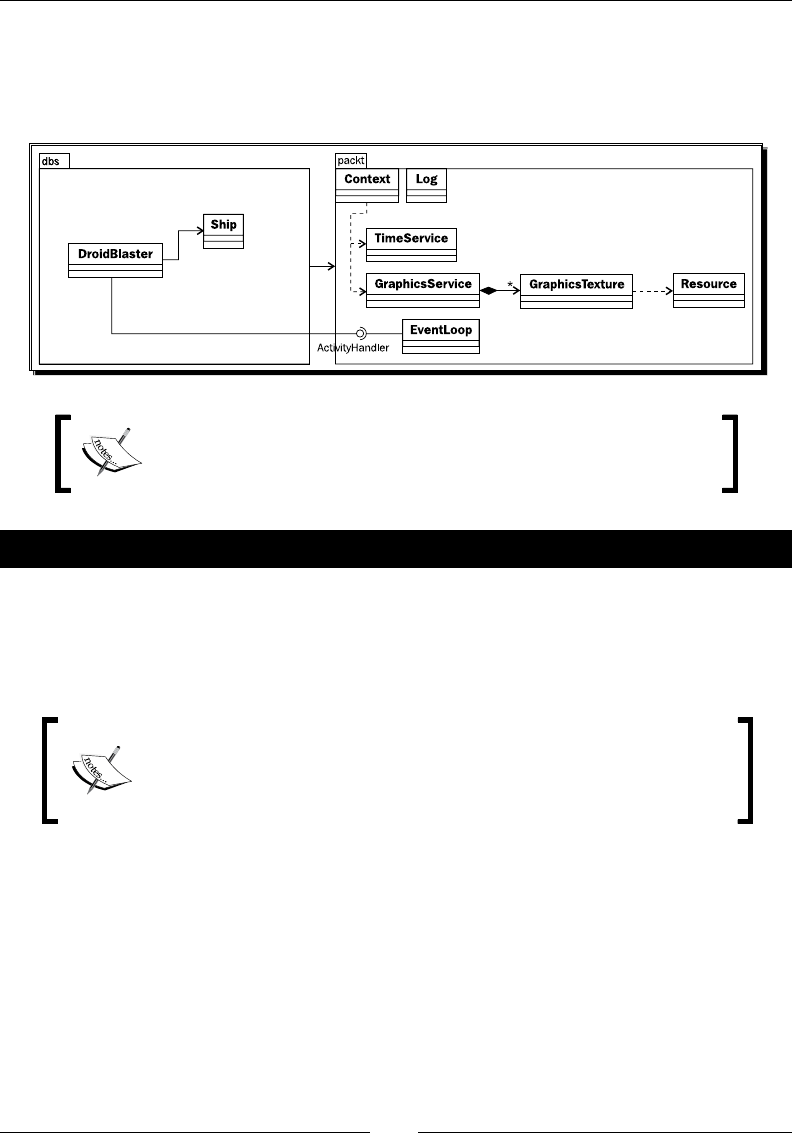

- Chapter 6: Rendering Graphics with OpenGL ES

- Initializing OpenGL ES

- Time for action – initializing OpenGL ES

- Reading PNG textures with the asset manager

- Time for action – loading a texture in OpenGL ES

- Drawing a sprite

- Time for action – drawing a Ship sprite

- Rendering a tile map with vertex buffer objects

- Time for action – drawing a tile-based background

- Summary

- Chapter 7: Playing Sound with OpenSL ES

- Chapter 8: Handling Input Devices and Sensors

- Chapter 9: Porting Existing Libraries to Android

- Chapter 10: Towards Professional Gaming

- Chapter 11: Debugging and Troubleshooting

- Index

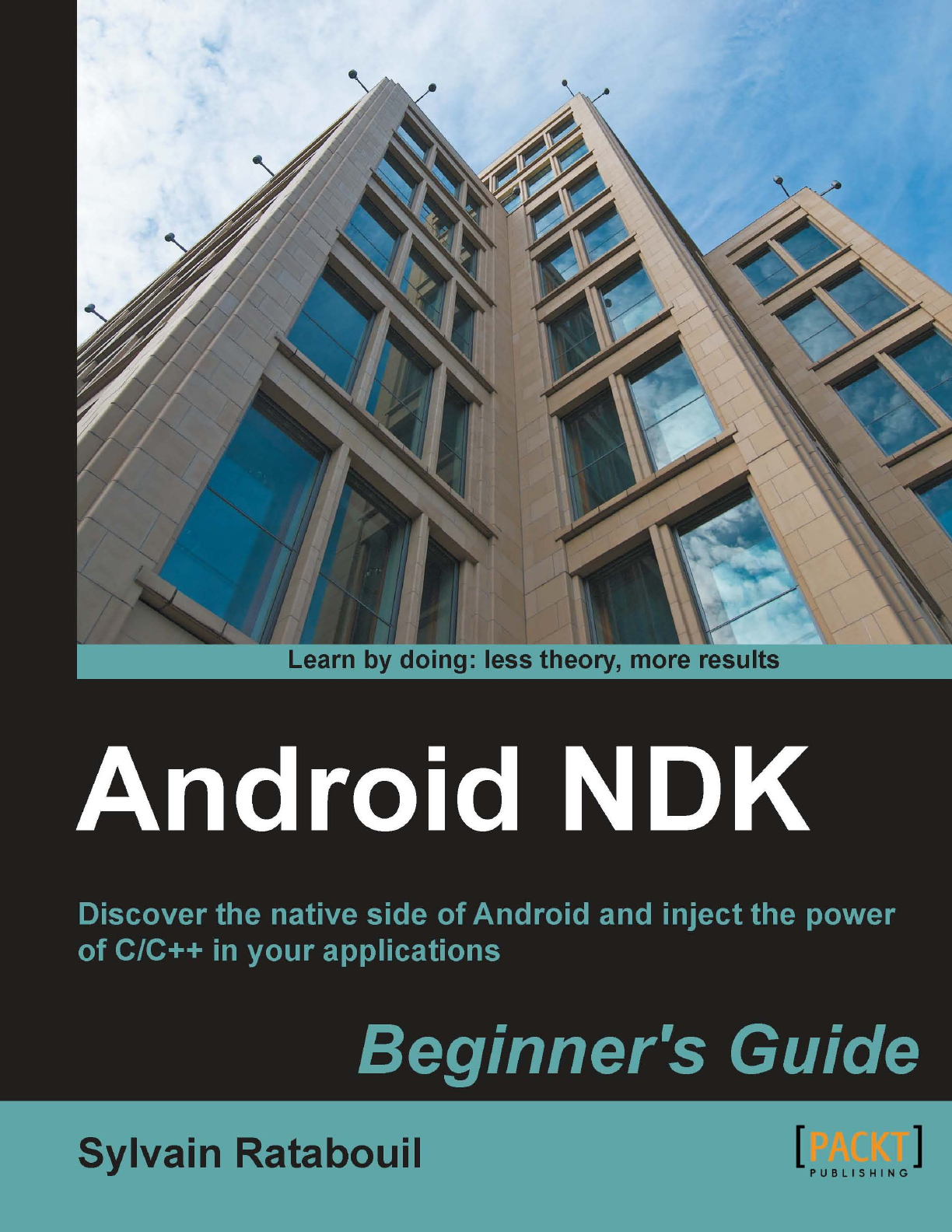

Android NDK

Beginner's Guide

Discover the native side of Android and inject the power of C/C++ in your applications

Sylvain Ratabouil

BIRMINGHAM - MUMBAI


Android NDK

Beginner's Guide

Copyright © 2012 Packt Publishing

All rights reserved. No part of this book may be reproduced, stored in a retrieval system, or transmitted in any form or by any means, without the prior written permission of the publisher, except in the case of brief quotations embedded in critical articles or reviews.

Every effort has been made in the preparation of this book to ensure the accuracy of the information presented. However, the information contained in this book is sold without warranty, either express or implied. Neither the author nor Packt Publishing, and its dealers and distributors will be held liable for any damages caused or alleged to be caused directly or indirectly by this book.

Packt Publishing has endeavored to provide trademark information about all of the companies and products mentioned in this book by the appropriate use of capitals. However, Packt Publishing cannot guarantee the accuracy of this information.

First published: January 2012

Production Reference: 1200112

Published by Packt Publishing Ltd.

Livery Place

35 Livery Street

Birmingham B3 2PB, UK.

ISBN 978-1-84969-152-9

www.packtpub.com

Cover Image by Marcus Grandon (marcusgrandon@mac.com)

Credits

Author

Sylvain Ratabouil

Reviewers

Marko Gargenta

Dr. Frank Grützmacher

Robert Mitchell

Acquisition Editor

Sarah Cullington

Lead Technical Editor

Dayan Hyames

Technical Editor

Pramila Balan

Copy Editor

Laxmi Subramanian

Project Coordinator

Jovita Pinto

Proofreader

Lynda Sliwoski

Indexer

Hemangini Bari

Graphics

Valentina D'silva

Production Coordinators

Prachali Bhiwandkar

Melwyn D'sa

Nilesh Mohite

Cover Work

Alwin Roy

About the Author

Sylvain Ratabouil is a seasoned IT consultant with experience in C++ and Java technologies. He worked for the space industry and got involved in aeronautic projects at Valtech Technologies, where he now takes part in the Digital Revolution.

Sylvain earned a master's degree in IT from Paul Sabatier University in Toulouse and an M.Sc. in Computer Science from Liverpool University.

As a technology lover, he is passionate about mobile technologies and cannot live or sleep

without his Android smartphone.

I would like to thank Steven Wilding for offering me the opportunity to write this book; Sneha Harkut and Jovita Pinto for waiting for me with so much patience; Reshma Sundaresan and Dayan Hyames for putting this book on the right track; Sarah Cullington for helping me finalize this book; Dr. Frank Grützmacher, Marko Gargenta, and Robert Mitchell for all their helpful comments.

About the Reviewers

Dr. Frank Grützmacher has worked for several major German firms in the area of large distributed systems. He was an early user of different CORBA implementations. He got his Ph.D. in the field of electrical engineering, with a focus on distributed heterogeneous systems. In 2010, he was involved in a project that changed parts of the Android platform for a manufacturer. From there, he gained his knowledge of the Android NDK and native processes on this platform.

He has already worked as a reviewer for another Android 3.0 book.

Robert Mitchell is an MIT graduate with over 40 years of experience in Information Technology and is semi-retired. He has developed software for all the big iron companies: IBM, Amdahl, Fujitsu, National Semiconductor, and Storage Technology. Software companies include Veritas and Symantec. Recent languages that he knows are Ruby and Java, with a long background in C++.

www.PacktPub.com

Support files, eBooks, discount offers and more

You might want to visit www.PacktPub.com for support files and downloads related to your book.

Did you know that Packt offers eBook versions of every book published, with PDF and ePub files available? You can upgrade to the eBook version at www.PacktPub.com and as a print book customer, you are entitled to a discount on the eBook copy. Get in touch with us at service@packtpub.com for more details.

At www.PacktPub.com, you can also read a collection of free technical articles, sign up for a range of free newsletters and receive exclusive discounts and offers on Packt books and eBooks.

http://PacktLib.PacktPub.com

Do you need instant solutions to your IT questions? PacktLib is Packt's online digital book library. Here, you can access, read and search across Packt's entire library of books.

Why Subscribe?

Fully searchable across every book published by Packt

Copy and paste, print and bookmark content

On demand and accessible via web browser

Free Access for Packt account holders

If you have an account with Packt at www.PacktPub.com, you can use this to access PacktLib today and view nine entirely free books. Simply use your login credentials for immediate access.

Table of Contents

Preface 1

Chapter 1: Setting Up your Environment 7

Getting started with Android development 7

Setting up Windows 8

Time for action – preparing Windows for Android development 8

Installing Android development kits on Windows 12

Time for action – installing Android SDK and NDK on Windows 13

Setting up Mac OS X 18

Time for action – preparing Mac OS X for Android development 18

Installing Android development kits on Mac OS X 20

Time for action – installing Android SDK and NDK on Mac OS X 20

Setting up Linux 22

Time for action – preparing Ubuntu Linux for Android development 22

Installing Android development kits on Linux 27

Time for action – installing Android SDK and NDK on Ubuntu 27

Setting up the Eclipse development environment 29

Time for action – installing Eclipse 29

Emulating Android 33

Time for action – creating an Android virtual device 33

Developing with an Android device on Windows and Mac OS X 37

Time for action – setting up your Android device on Windows and Mac OS X 37

Developing with an Android device on Linux 39

Time for action – setting up your Android device on Ubuntu 39

Troubleshooting a development device 42

Summary 43

Chapter 2: Creating, Compiling, and Deploying Native Projects 45

Compiling and deploying NDK sample applications 46

Time for action – compiling and deploying the hellojni sample 46


Exploring Android SDK tools 51

Android debug bridge 51

Project configuration tool 54

Creating your first Android project using Eclipse 56

Time for action – initiating a Java project 56

Introducing Dalvik 59

Interfacing Java with C/C++ 60

Time for action – calling C code from Java 60

More on Makefiles 65

Compiling native code from Eclipse 67

Time for action – creating a hybrid Java/C/C++ project 67

Summary 72

Chapter 3: Interfacing Java and C/C++ with JNI 73

Working with Java primitives 74

Time for action – building a native key/value store 75

Referencing Java objects from native code 85

Time for action – saving a reference to an object in the Store 85

Local and global JNI references 90

Throwing exceptions from native code 91

Time for action – raising exceptions from the Store 92

JNI in C++ 96

Handling Java arrays 96

Time for action – saving a reference to an object in the Store 97

Checking JNI exceptions 106

Summary 107

Chapter 4: Calling Java Back from Native Code 109

Synchronizing Java and native threads 110

Time for action – running a background thread 111

Attaching and detaching threads 120

More on Java and native code lifecycles 121

Calling Java back from native code 122

Time for action – invoking Java code from a native thread 122

More on callbacks 133

JNI method definitions 134

Processing bitmaps natively 135

Time for action – decoding camera feed from native code 136

Summary 146

Chapter 5: Writing a Fully-native Application 147

Creating a native activity 148

Time for action – creating a basic native activity 148


Handling activity events 155

Time for action – handling activity events 155

More on Native App Glue 166

UI thread 167

Native thread 168

Android_app structure 170

Accessing window and time natively 171

Time for action – displaying raw graphics and implementing a timer 172

More on time primitives 181

Summary 181

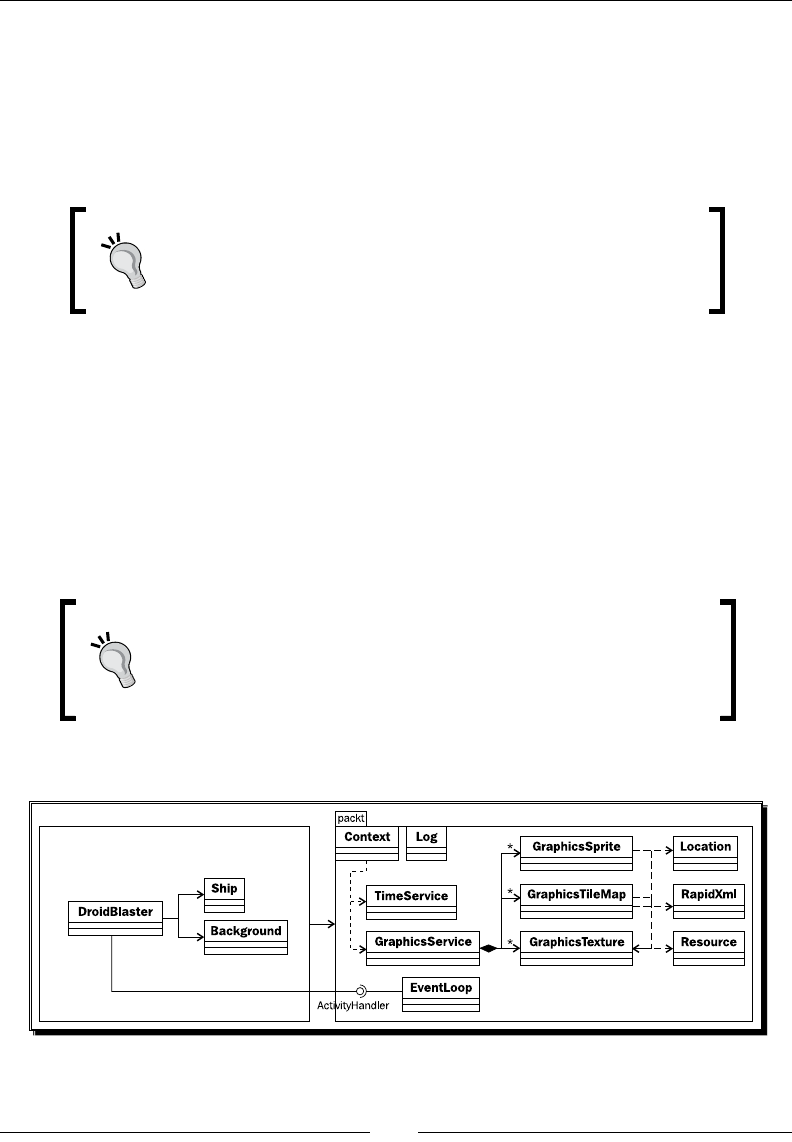

Chapter 6: Rendering Graphics with OpenGL ES 183

Initializing OpenGL ES 184

Time for action – initializing OpenGL ES 184

Reading PNG textures with the asset manager 193

Time for action – loading a texture in OpenGL ES 194

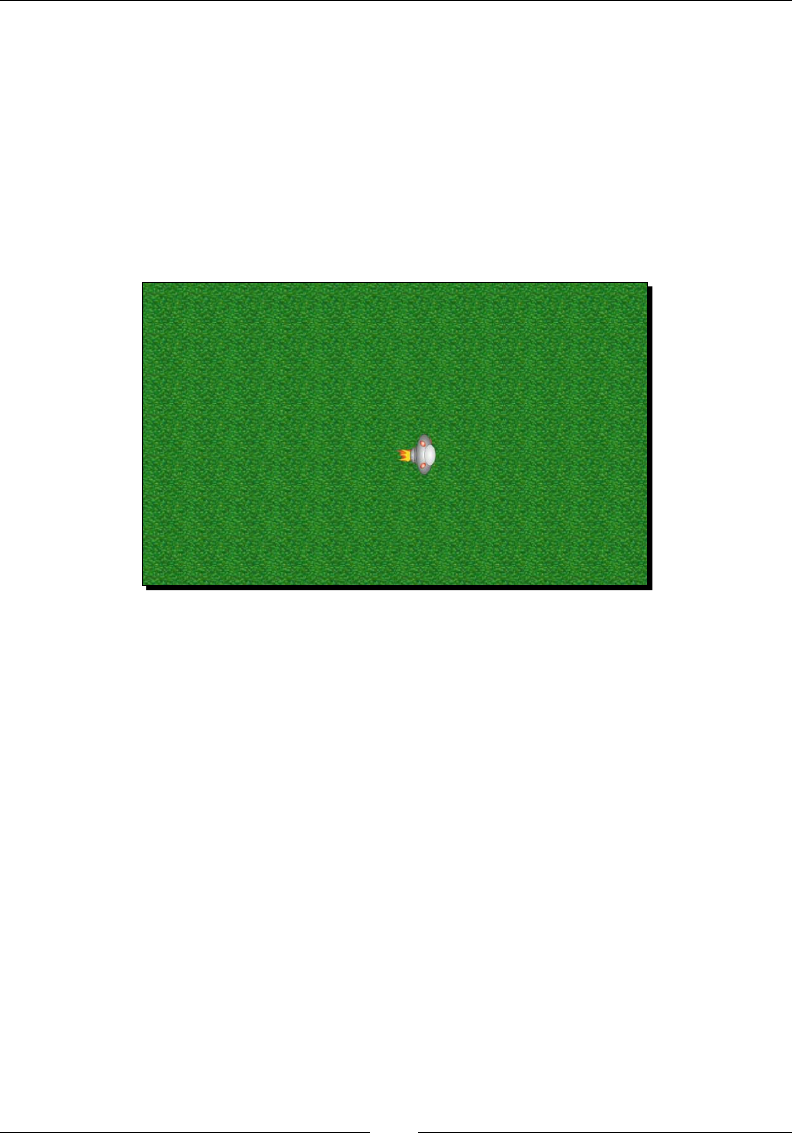

Drawing a sprite 208

Time for action – drawing a Ship sprite 209

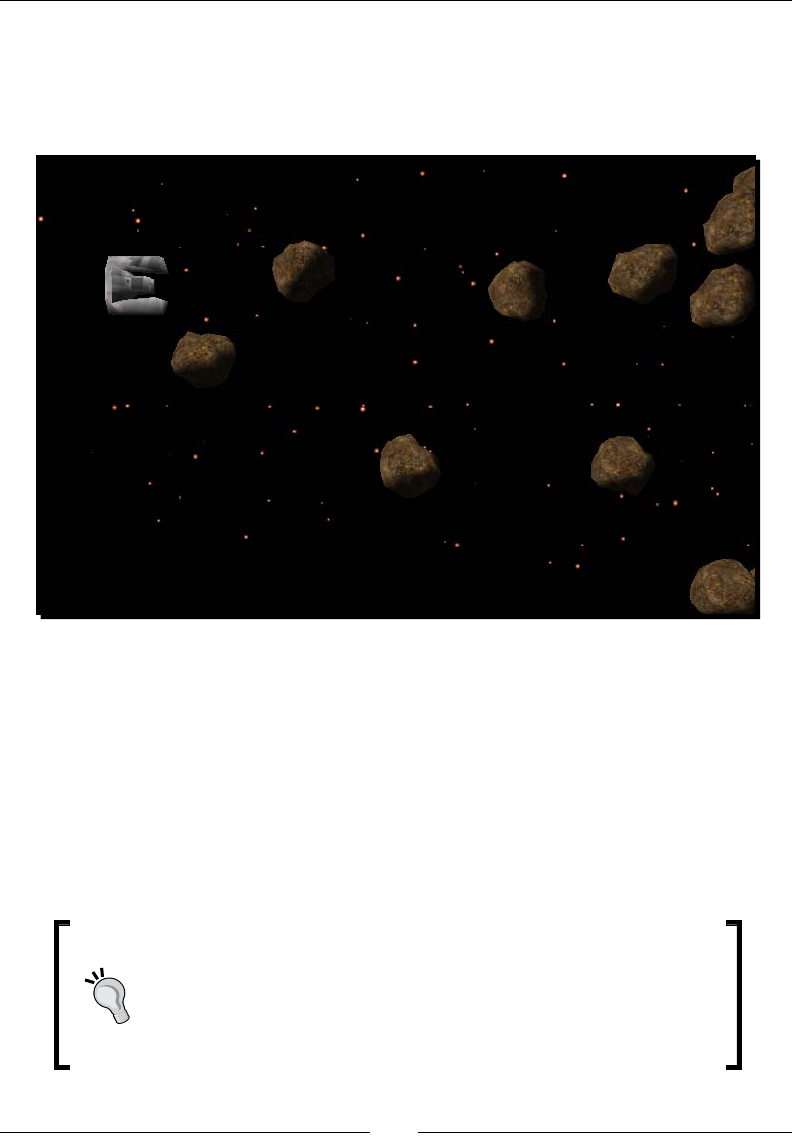

Rendering a tile map with vertex buffer objects 220

Time for action – drawing a tile-based background 221

Summary 238

Chapter 7: Playing Sound with OpenSL ES 239

Initializing OpenSL ES 241

Time for action – creating OpenSL ES engine and output 241

More on OpenSL ES philosophy 248

Playing music files 249

Time for action – playing background music 249

Playing sounds 256

Time for action – creating and playing a sound buffer queue 257

Event callback 266

Recording sounds 268

Summary 272

Chapter 8: Handling Input Devices and Sensors 273

Interacting with Android 274

Time for action – handling touch events 276

Detecting keyboard, D-Pad, and Trackball events 288

Time for action – handling keyboard, D-Pad, and trackball, natively 289

Probing device sensors 298

Time for action – turning your device into a joypad 300

Summary 313


Chapter 9: Porting Existing Libraries to Android 315

Developing with the Standard Template Library 316

Time for action – embedding GNU STL in DroidBlaster 316

Static versus shared 326

STL performances 327

Compiling Boost on Android 328

Time for action – embedding Boost in DroidBlaster 328

Porting third-party libraries to Android 338

Time for action – compiling Box2D and Irrlicht with the NDK 339

GCC optimization levels 346

Mastering Makefiles 346

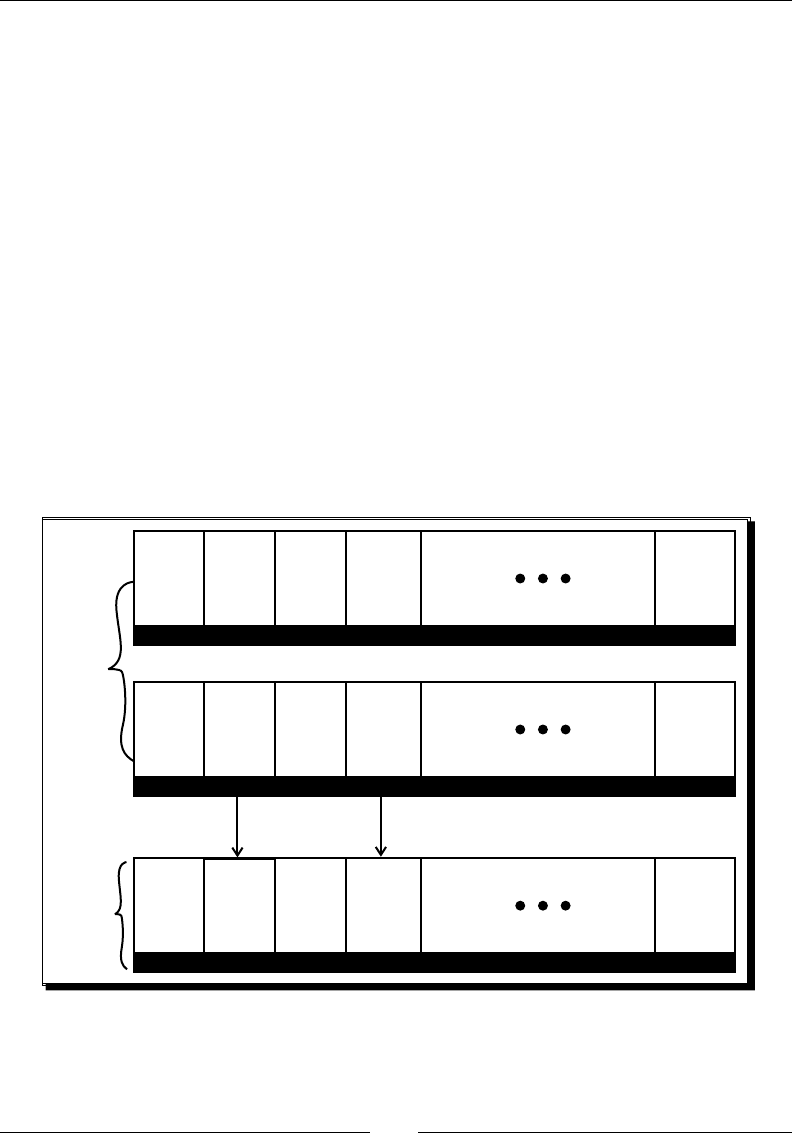

Makefile variables 347

Makefile Instructions 348

Summary 351

Chapter 10: Towards Professional Gaming 353

Simulating physics with Box2D 353

Time for action – simulating physics with Box2D 354

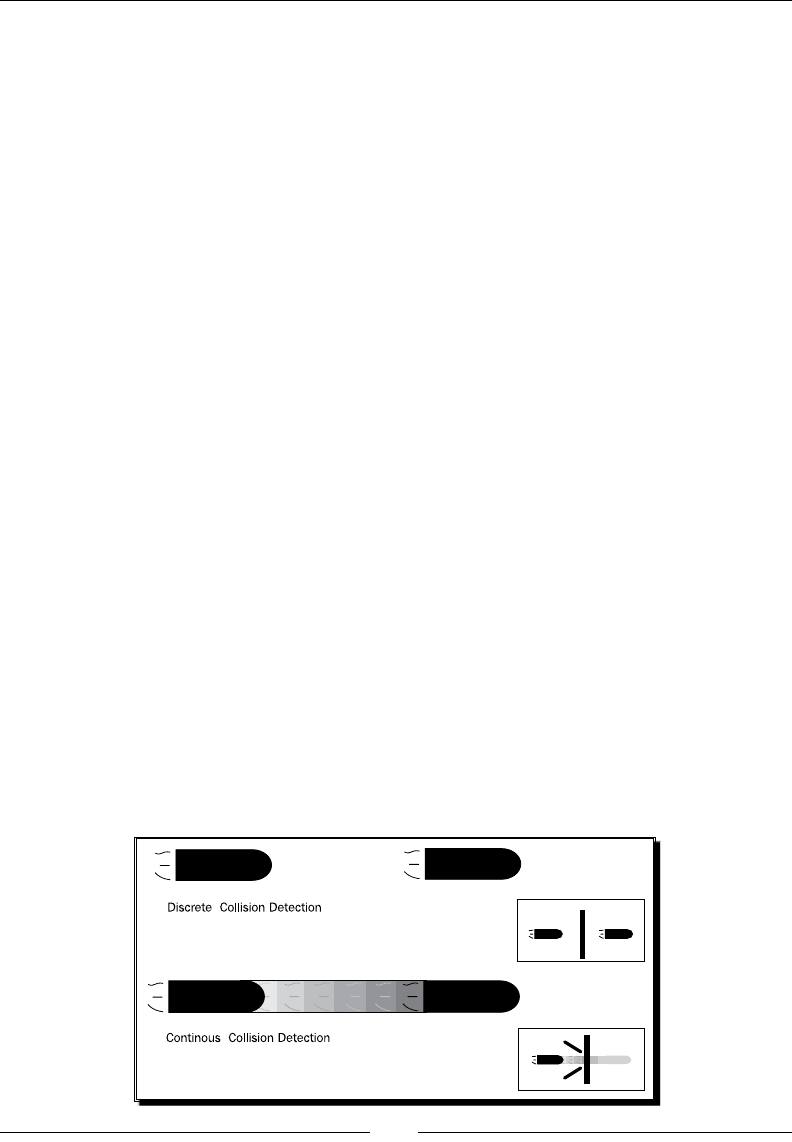

More on collision detection 366

Collision modes 367

Collision filtering 368

More resources about Box2D 369

Running a 3D engine on Android 369

Time for action – rendering 3D graphics with Irrlicht 370

More on Irrlicht scene management 381

Summary 382

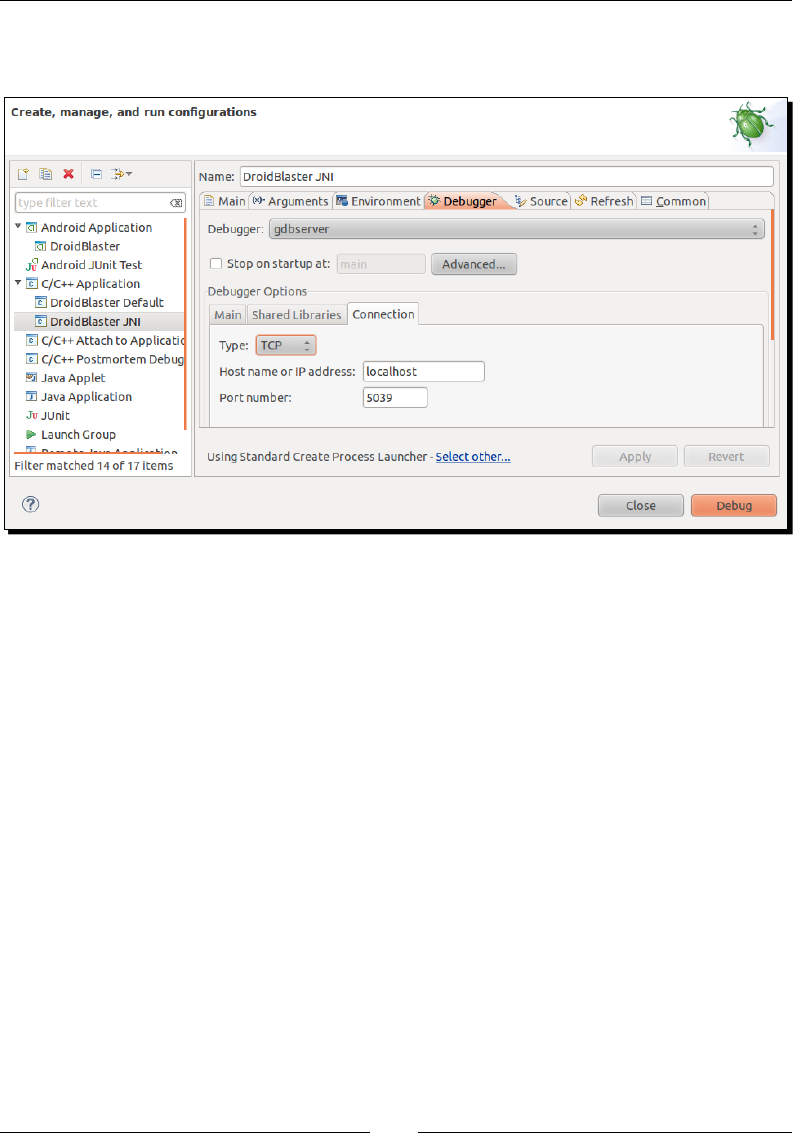

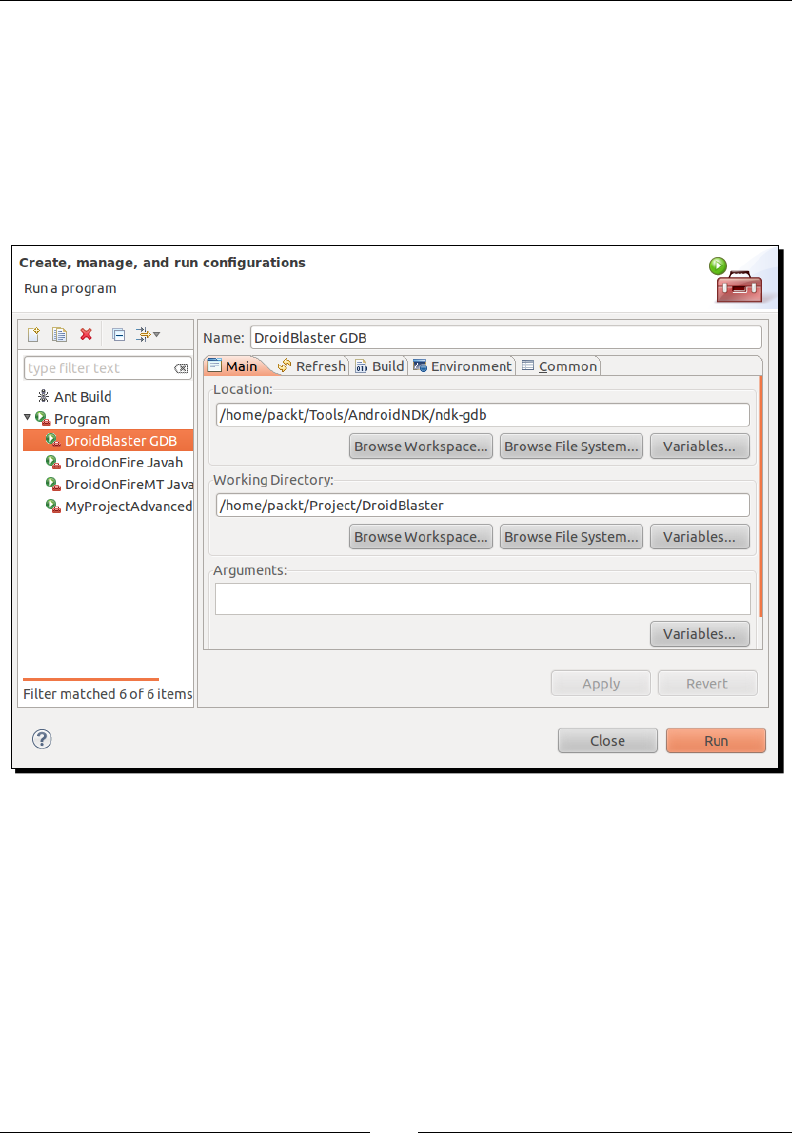

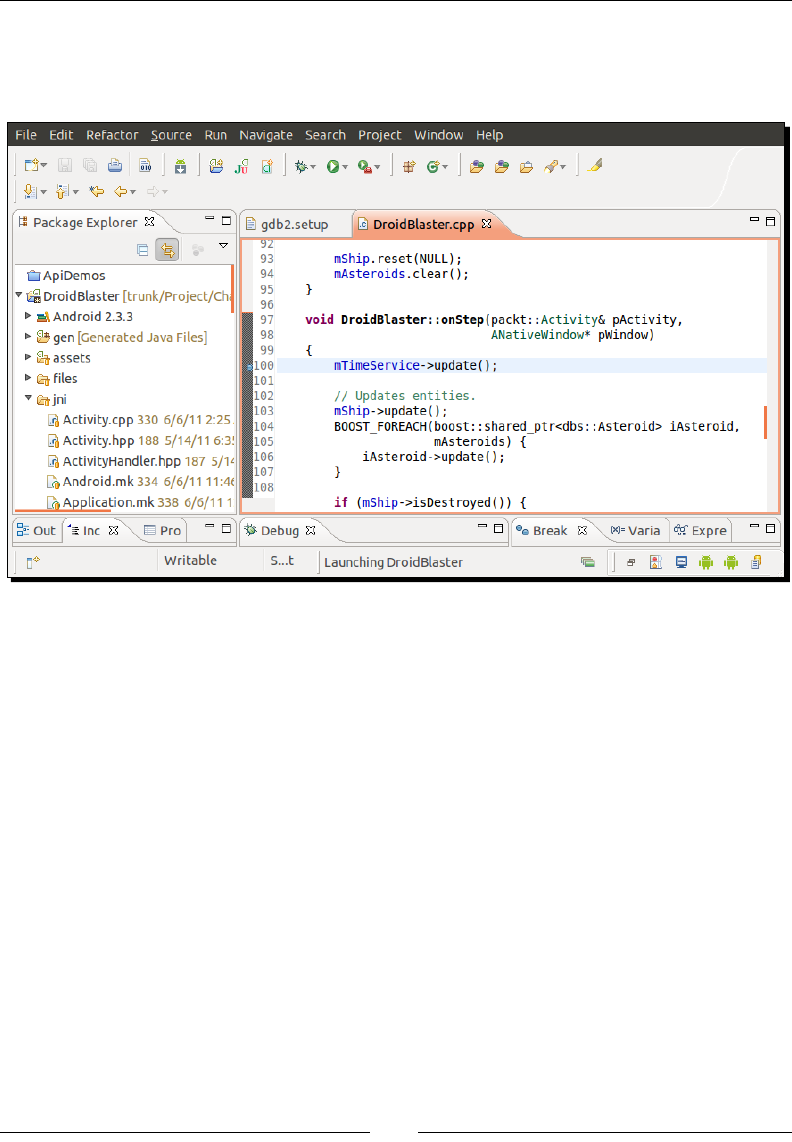

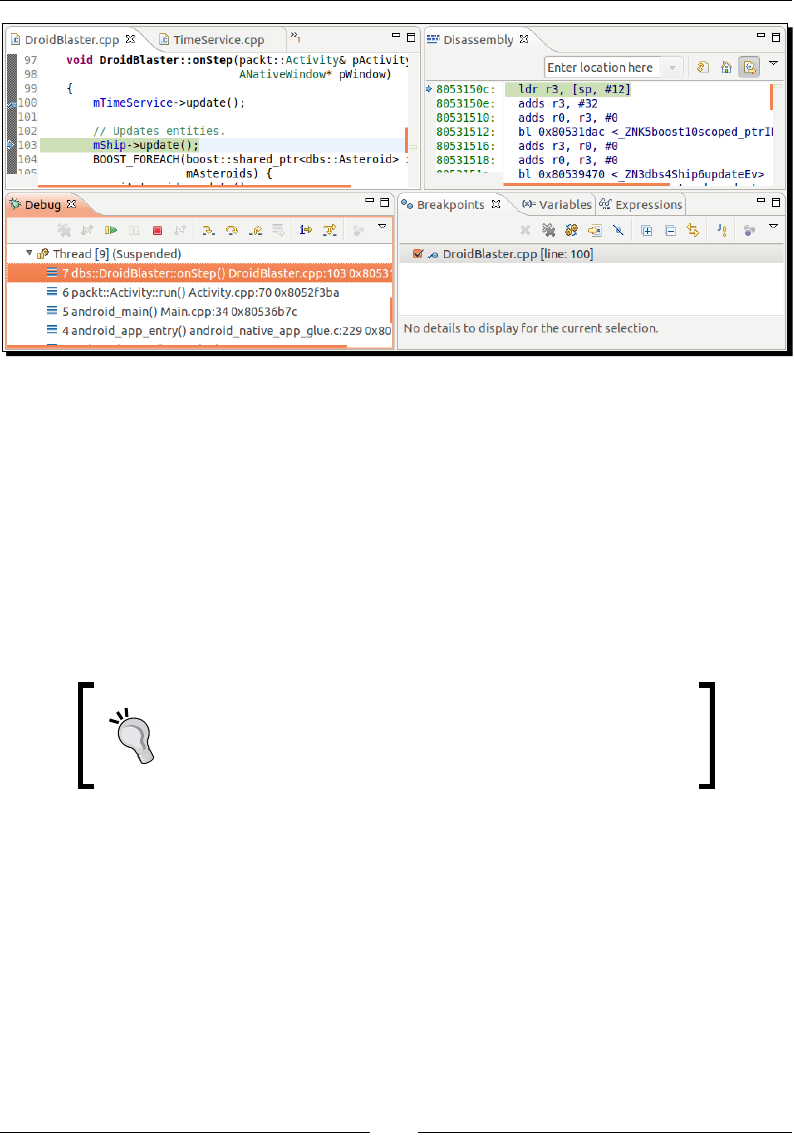

Chapter 11: Debugging and Troubleshooting 383

Debugging with GDB 383

Time for action – debugging DroidBlaster 384

Stack trace analysis 392

Time for action – analysing a crash dump 392

More on crash dumps 396

Performance analysis 397

Time for action – running GProf 398

How it works 403

ARM, thumb, and NEON 403

Summary 405

Index 411

Preface

The short history of computing machines has witnessed some major events, which forever transformed our usage of technology: from the first massive mainframes to the democratization of personal computers, and then the interconnection of networks. Mobility is the next revolution. Like the primitive soup, all the ingredients are now gathered: a ubiquitous network, new social, professional and industrial usages, and a powerful technology. A new period of innovation is blooming right now in front of our eyes. We can fear it or embrace it, but it is here, for good!

The mobile challenge

Today's mobile devices are the product of only a few years of evolution, from the first transportable phones to the new tiny high-tech monsters we have in our pockets. The technological time scale is definitely not the same as the human one.

Only a few years ago, surfing on the successful wave of its musical devices, Apple and its founder Steve Jobs combined the right hardware and the right software at the right time, not only to satisfy our needs, but to create new ones. We are now facing a new ecosystem looking for a balance between iOS, Windows Mobile, Blackberry, WebOS, and more importantly Android! The appetite of a new market could not leave Google indifferent. Standing on the shoulders of this Internet giant, Android came into the show as the best alternative to the well-established iPhones and iPads. And it is quickly becoming the number one.

In this modern Eldorado, new usages or, technically speaking, applications (activities, if you already are an Android adept) still have to be invented. This is the mobile challenge. And the dematerialized country of Android is the perfect place to look for them. Android is (mostly) an open source operating system now supported by a large panel of mobile device manufacturers.


Portability among hardware and adaptability to the constrained resources of mobile devices: this is the real essence of the mobile challenge from a technical perspective. With Android, one has to deal with multiple screen resolutions, various CPU and GPU speeds or capabilities, memory limitations, and so on. These topics are not specific to this Linux-based system (that is, Android), but they can be particularly troublesome.

To ease portability, Google engineers packaged a virtual machine with a complete framework (the Android SDK) to run programs written in one of the most widespread programming languages nowadays: Java. Java, augmented with the Android framework, is really powerful. But first, Java is specific to Android: Apple's products, for example, are written in Objective-C and can be combined with C and C++. And second, a Java virtual machine does not always give you enough capability to exploit the full power of mobile devices, even with just-in-time compilation enabled. Resources are limited on these devices and have to be carefully exploited to offer the best experience. This is where the Android Native Development Kit comes into play.

What this book covers

Chapter 1, Setting Up your Environment, covers the tools required to develop an application with the Android NDK. This chapter also covers how to set up a development environment, connect your Android device, and configure the Android emulator.

In Chapter 2, Creating, Compiling, and Deploying Native Projects, we will compile, package, and deploy NDK samples and create our first Android Java/C hybrid project with the NDK and Eclipse.

Chapter 3, Interfacing Java and C/C++ with JNI, presents how Java integrates and communicates with C/C++ through the Java Native Interface.

In Chapter 4, Calling Java Back from Native Code, we will call Java from C to achieve bidirectional communication and process graphic bitmaps natively.

Chapter 5, Writing a Fully-native Application, looks into the Android NDK application life-cycle. We will also write a fully native application to get rid of Java.

Chapter 6, Rendering Graphics with OpenGL ES, teaches how to display advanced 2D and 3D graphics at full speed with OpenGL ES. We will initialize the display, load textures, draw sprites, and allocate vertex and index buffers to display meshes.

Chapter 7, Playing Sound with OpenSL ES, adds a musical dimension to native applications with OpenSL ES, a unique feature provided only by the Android NDK. We will also record sounds and reproduce them on the speakers.


Chapter 8, Handling Input Devices and Sensors, covers how to interact with an Android device through its multi-touch screen. We will also see how to handle keyboard events natively, apprehend the world through sensors, and turn a device into a game controller.

In Chapter 9, Porting Existing Libraries to Android, we will compile the indispensable C/C++ frameworks, STL and Boost. We will also see how to enable exceptions and RunTime Type Information, and how to port our own or third-party libraries to Android, such as the Irrlicht 3D engine and the Box2D physics engine.

Chapter 10, Towards Professional Gaming, creates a running 3D game controlled with

touches and sensors using Irrlicht and Box2D.

Chapter 11, Debugging and Troubleshooting, provides an in-depth analysis of the running application with the NDK debug utility. We will also analyze crash dumps and profile the performance of our application.

What you need for this book

A PC with either Windows or Linux, or an Intel-based Mac. As a test machine, an Android device is highly advisable, although the Android SDK provides an emulator which can satisfy most of the needs of a hungry developer. But for 2D and 3D graphics, it is still too limited and slow.

I assume you already understand the C and C++ languages, pointers, object-oriented features, and other modern language concepts. I also assume you have some knowledge about the Android platform and how to create Android Java applications. This is not a strong prerequisite, but preferable. I also guess you are not frightened by command-line terminals.

The version of Eclipse used throughout this book is Helios (3.6).

Finally, bring all your enthusiasm because these little beasts can become really amazing when they demonstrate all their potential and sense of contact.

Who this book is for

Are you an Android Java programmer who needs more performance? Are you a C/C++ developer who doesn't want to bother with Java stuff and its out-of-control garbage collector? Do you want to create fast, intensive multimedia applications or games? Answer yes to any of the above questions and this book is for you. With some general knowledge of C/C++ development, you will be able to dive head first into native Android development.


Conventions

In this book, you will find several headings appearing frequently.

To give clear instructions of how to complete a procedure or task, we use:

Time for action – heading

1. Action 1

2. Action 2

3. Action 3

Instructions often need some extra explanation so that they make sense, so they are followed with:

What just happened?

This heading explains the working of tasks or instrucons that you have just completed.

You will also find some other learning aids in the book, including:

Pop quiz – heading

These are short multiple choice questions intended to help you test your own understanding.

Have a go hero – heading

These set practical challenges and give you ideas for experimenting with what you have learned.

You will also find a number of styles of text that distinguish between different kinds of information. Here are some examples of these styles, and an explanation of their meaning.

Code words in text are shown as follows: "Open a command line window and key in java -version to check the installation."

A block of code is set as follows:

export ANT_HOME=`cygpath -u "$ANT_HOME"`
export JAVA_HOME=`cygpath -u "$JAVA_HOME"`
export ANDROID_SDK=`cygpath -u "$ANDROID_SDK"`
export ANDROID_NDK=`cygpath -u "$ANDROID_NDK"`


When we wish to draw your attention to a particular part of a code block, the relevant lines or items are set in bold:

<?xml version="1.0" encoding="utf-8"?>
<manifest xmlns:android="http://schemas.android.com/apk/res/android"
    package="com.example.hellojni"
    android:versionCode="1"
    android:versionName="1.0">

Any command-line input or output is written as follows:

$ make --version

New terms and important words are shown in bold. Words that you see on the screen, in

menus or dialog boxes for example, appear in the text like this: "When proposed, include

Devel/make and Shells/bash packages".

Warnings or important notes appear in a box like this.

Tips and tricks appear like this.

Reader feedback

Feedback from our readers is always welcome. Let us know what you think about this book: what you liked or may have disliked. Reader feedback is important for us to develop titles that you really get the most out of.

To send us general feedback, simply send an e-mail to feedback@packtpub.com, and mention the book title in the subject of your message.

If there is a topic that you have expertise in and you are interested in either writing or contributing to a book, see our author guide on www.packtpub.com/authors.

Customer support

Now that you are the proud owner of a Packt book, we have a number of things to help

you to get the most from your purchase.


Downloading the example code

You can download the example code files for all Packt books you have purchased from your account at http://www.packtpub.com. If you purchased this book elsewhere, you can visit http://www.packtpub.com/support and register to have the files e-mailed directly to you.

Errata

Although we have taken every care to ensure the accuracy of our content, mistakes do happen. If you find a mistake in one of our books, maybe a mistake in the text or the code, we would be grateful if you would report this to us. By doing so, you can save other readers from frustration and help us improve subsequent versions of this book. If you find any errata, please report them by visiting http://www.packtpub.com/support, selecting your book, clicking on the errata submission form link, and entering the details of your errata. Once your errata are verified, your submission will be accepted and the errata will be uploaded to our website, or added to any list of existing errata, under the Errata section of that title.

Piracy

Piracy of copyright material on the Internet is an ongoing problem across all media. At Packt, we take the protection of our copyright and licenses very seriously. If you come across any illegal copies of our works, in any form, on the Internet, please provide us with the location address or website name immediately so that we can pursue a remedy.

Please contact us at copyright@packtpub.com with a link to the suspected pirated material.

We appreciate your help in protecting our authors, and our ability to bring you valuable content.

Questions

You can contact us at questions@packtpub.com if you are having a problem with any

aspect of the book, and we will do our best to address it.


1

Setting Up your Environment

Are you ready to take up the mobile challenge? Is your computer switched on, mouse and keyboard plugged in, and screen illuminating your desk? Then let’s not wait a minute more!

In this first chapter, we are going to do the following:

Download and install the necessary tools to develop applications using Android

Set up a development environment

Connect and prepare an Android device for development

Getting started with Android development

What differentiates mankind from animals is the use of tools. Android developers, this authentic species you are about to belong to, are no different!

To develop applications on Android, we can use any of the following three platforms:

Microsoft Windows PC

Apple Mac OS X

Linux PC

Windows 7, Vista, Mac OS X, and Linux systems are supported in both 32 and 64-bit versions,

but Windows XP in 32-bit mode only. Only Mac OS X computers of version 10.5.8 or later and

based on Intel architectures are supported (not PowerPC processors). Ubuntu is supported

only from version 8.04 (Hardy Heron).


Right, this is a good start but unless you are able to read and write binary language like English, having an OS is not enough. We also need software dedicated to Android development:

The JDK (Java Development Kit)

The Android SDK (Software Development Kit)

The Android NDK (Native Development Kit)

An IDE (Integrated Development Environment): Eclipse

Android, and more specifically the Android NDK compilation system, is heavily based on Linux. So we also need some utilities that Linux provides by default and, on Windows, an environment that supports them: Cygwin (until NDK R7). This topic is covered in detail later in the chapter.

Finally, a good old command-line Shell to manipulate all these utilities is essential: we will use Bash (the default on Cygwin, Ubuntu, and Mac OS X).

Now that we know what tools are necessary to work with Android, let’s start with the installation and setup process.

The following section is dedicated to Windows. If you are a Mac or Linux user, you can immediately jump to the Setting up Mac OS X or the Setting up Linux section.

Setting up Windows

Before installing the necessary tools, we need to set up Windows to host our Android

development tools properly.

Time for action – preparing Windows for Android development

To work with the Android NDK, we need to set up a Cygwin Linux-like environment

for Windows:

Since NDK R7, Cygwin installation is not required anymore (steps 1 to 9). The Android NDK provides additional native Windows binaries (for example, ndk-build.cmd).

1. Go to http://cygwin.com/install.html.

2. Download setup.exe and execute it.

3. Select Install from Internet.


4. Follow the wizard screens.

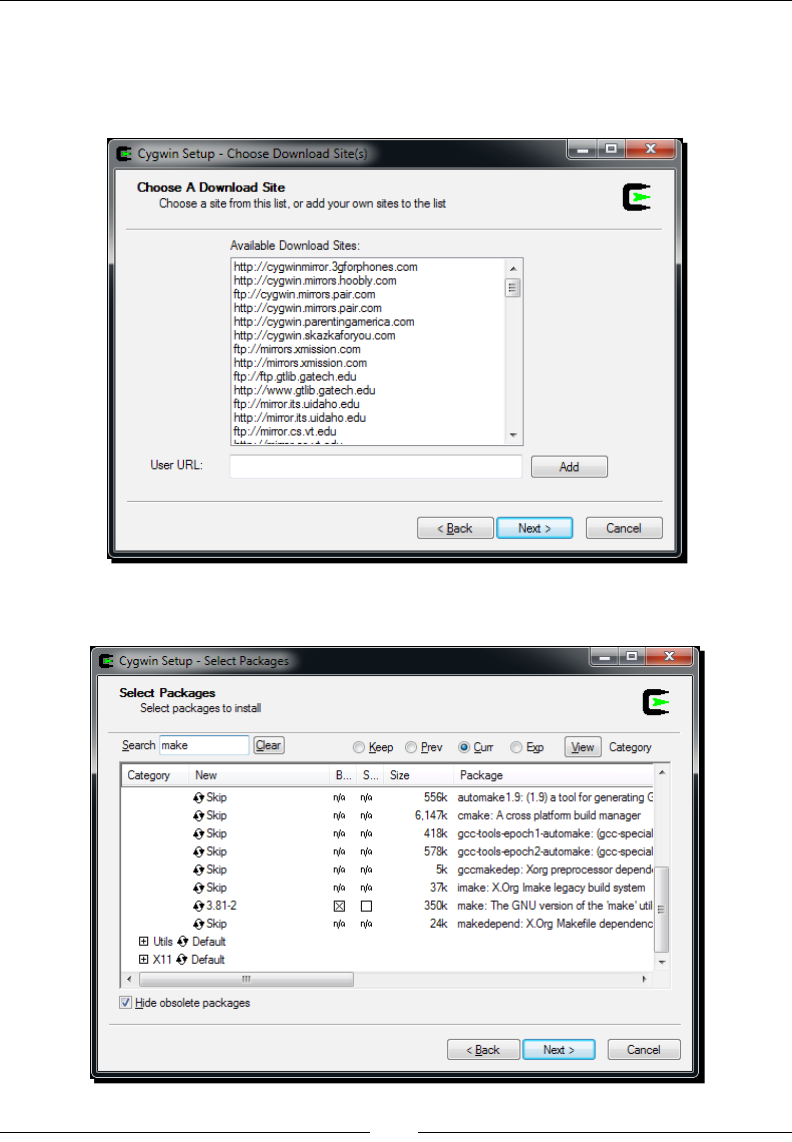

5. Select a download site from where Cygwin packages are going to be downloaded.

Consider using a server in your country:

6. When proposed, include Devel/make and Shells/bash packages:


7. Follow the installation wizard until the end. This may take some time depending on your Internet connection.

8. After installation, launch Cygwin. Your profile files get created on first launch.

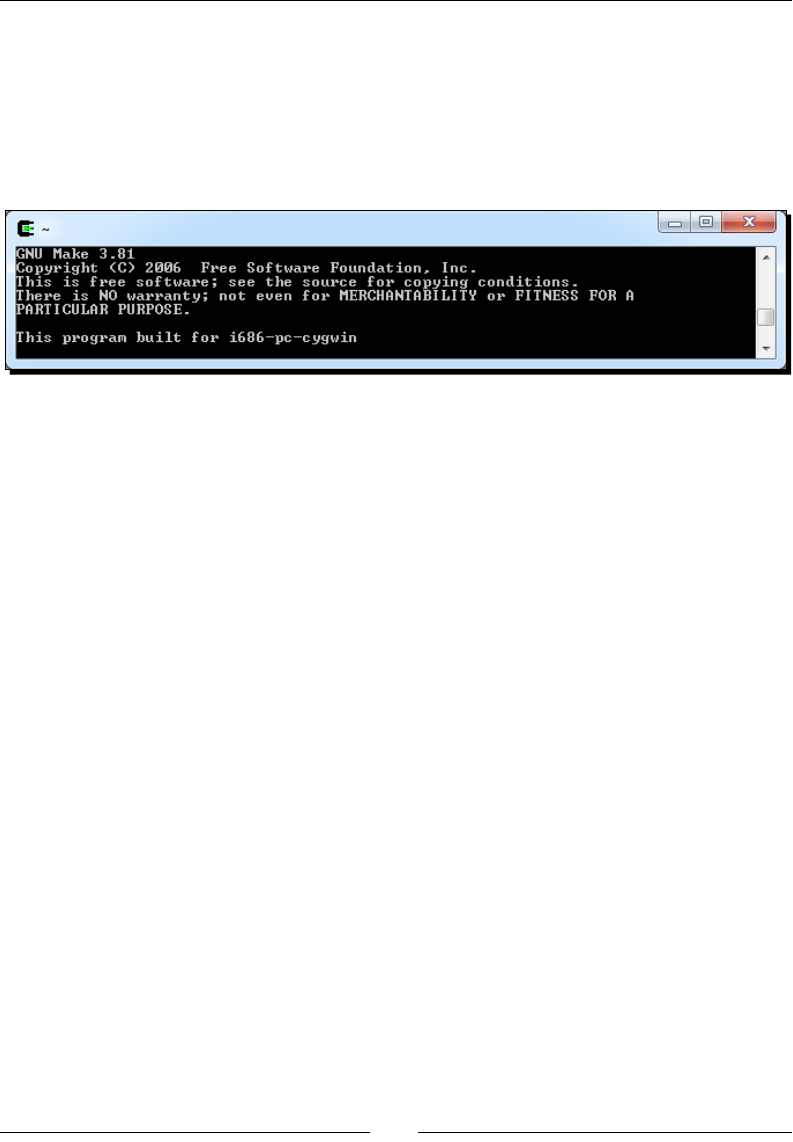

9. Enter the following command to check if Cygwin works:

$ make --version
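If you want to script this check, or confirm that both packages selected in step 6 are reachable from the Bash prompt, a small helper is enough. The following is a minimal POSIX shell sketch; require_tools is a hypothetical name of our own, not a Cygwin command. Remember that since NDK R7 this Cygwin setup is optional.

```shell
# Hypothetical helper: succeeds only if every named command is found on PATH.
# Each missing command is printed on its own line.
require_tools() {
    status=0
    for tool in "$@"; do
        if ! command -v "$tool" >/dev/null 2>&1; then
            printf '%s\n' "$tool"
            status=1
        fi
    done
    return "$status"
}

# Check the tools provided by the Devel/make and Shells/bash packages.
if require_tools make bash; then
    echo "make and bash are available"
else
    echo "install the missing Cygwin packages listed above" >&2
fi
```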

To run Eclipse and allow compilation of Android Java code to bytecode, a Java Development Kit is required. On Windows, the obvious choice is the Oracle Sun JDK:

1. Go to the Oracle website and download the latest Java Development Kit: http://www.oracle.com/technetwork/java/javase/downloads/index.html.

2. Launch the downloaded program and follow the installation wizard. At the end of the installation, a browser is opened asking for JDK registration. This step is optional and can be ignored.

3. To make sure the newly installed JDK is used, let’s define its location in environment variables. Open the Windows Control panel and go to the System panel (or right-click on the Computer item in the Windows Start menu and select Properties). Then go to Advanced system settings. The System Properties window appears. Finally, select the Advanced tab and click on the Environment Variables button.

4. In the Environment Variables window, inside the System variables list, insert the JAVA_HOME variable with the JDK installation directory as value and validate. Then edit PATH (or Path) and insert the %JAVA_HOME%\bin directory before any other directory, separating it with a semicolon. Validate and close the window.

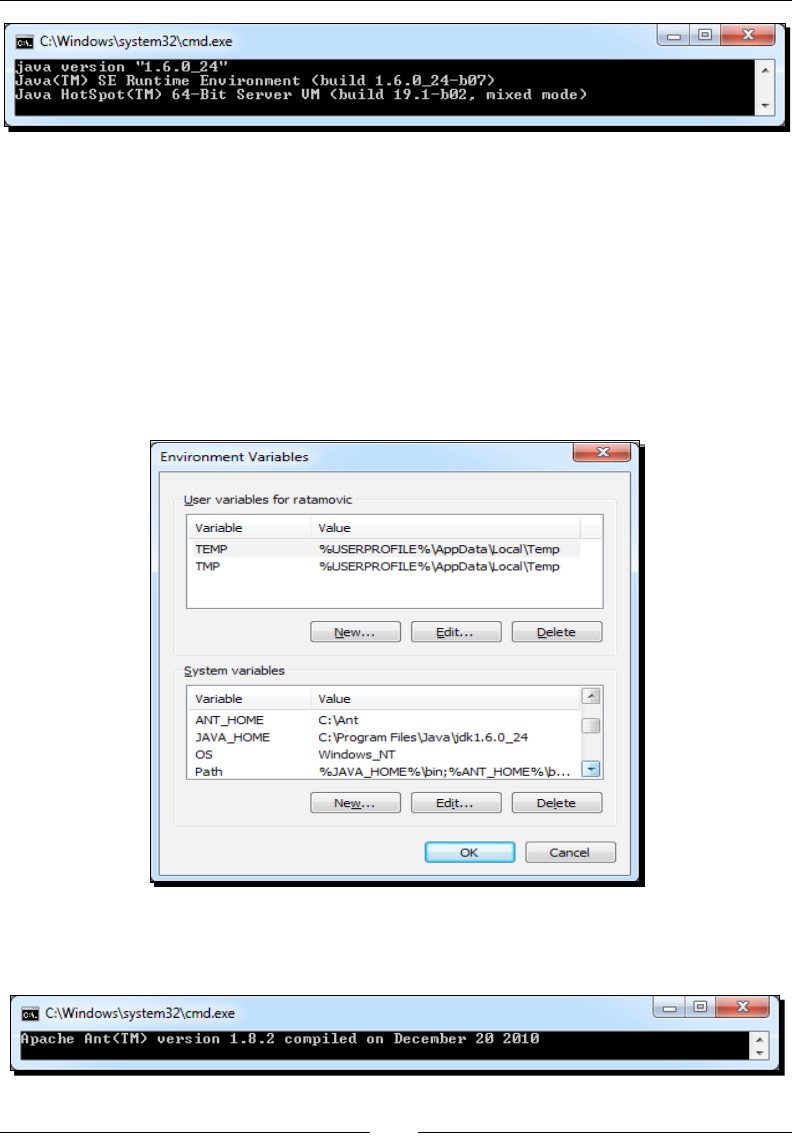

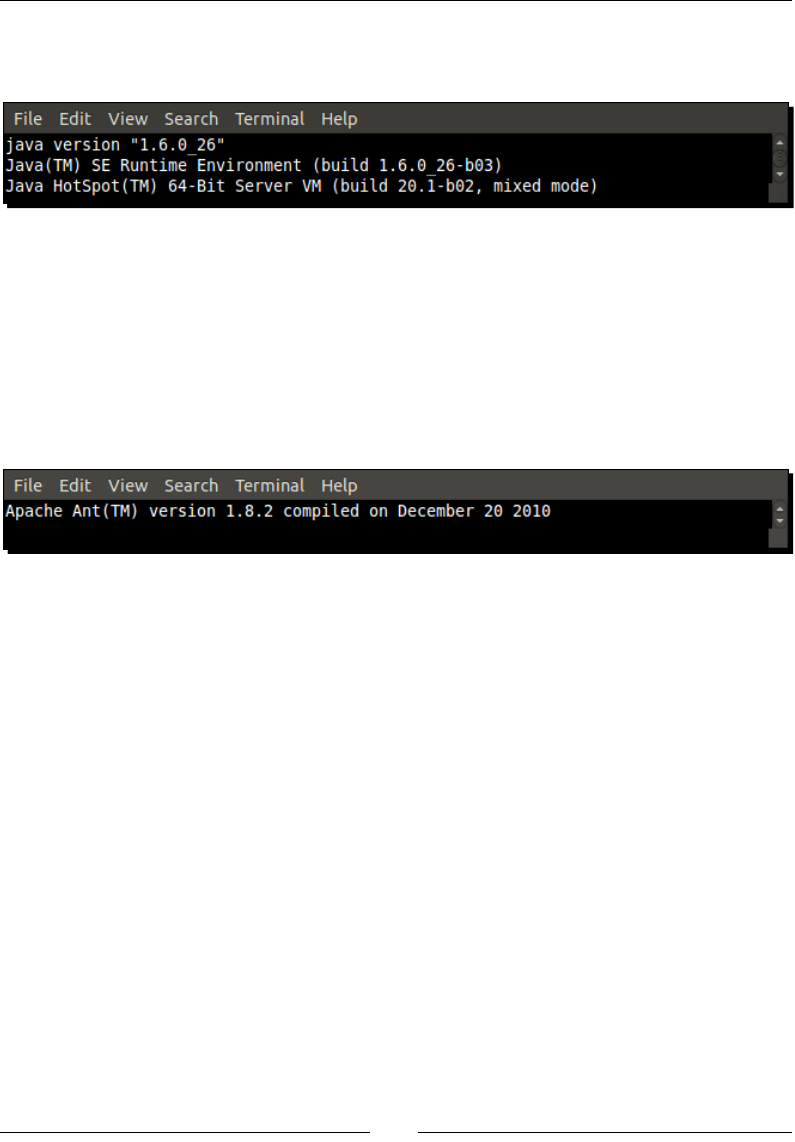

5. Open a command-line window and key in java -version to check the installation. The result should be similar to the following screenshot. Check carefully to make sure that the version number corresponds to the version of the newly installed JDK:

$ java -version
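The same two settings can be expressed from a Bash prompt, which is handy under Cygwin or on the Linux and Mac OS X setups described later in this chapter. The sketch below is only a rough equivalent of steps 4 and 5, under a few assumptions: JAVA_HOME already holds a Unix-style path (under Cygwin, convert it with cygpath -u as in the Preface conventions), /opt/jdk1.6.0 is a hypothetical default, and use_java_home and java_is_from_java_home are illustrative names. Note that Unix-style PATH entries are separated by a colon instead of a semicolon.

```shell
# Hypothetical default location; point JAVA_HOME at your own JDK directory.
JAVA_HOME=${JAVA_HOME:-/opt/jdk1.6.0}
export JAVA_HOME

# Put $JAVA_HOME/bin in front of every other PATH entry so that this JDK
# takes precedence over any older JRE (':' separates entries, not ';').
use_java_home() {
    PATH="$JAVA_HOME/bin:$PATH"
    export PATH
}

# Succeeds if the first "java" found on PATH is the one under JAVA_HOME.
java_is_from_java_home() {
    [ "$(command -v java)" = "$JAVA_HOME/bin/java" ]
}

use_java_home
if java_is_from_java_home; then
    java -version
else
    echo "warning: java does not resolve to $JAVA_HOME/bin/java" >&2
fi
```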


To compile projects from the command line, the Android SDK supports Ant, a Java-based build automation utility. Let’s install it:

1. Go to http://ant.apache.org/bindownload.cgi and download Ant binaries,

packed within a ZIP archive.

2. Unzip Ant in the directory of your choice (for example, C:\Ant).

3. Go back to the Environment Variables window, as we did for JAVA_HOME, and create the ANT_HOME variable with the Ant directory as the value. Append the %ANT_HOME%\bin directory to PATH:

4. From a classic Windows terminal, check the Ant version to make sure it is

properly working:
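From a Bash prompt, step 3 boils down to appending $ANT_HOME/bin to PATH. If that line lives in a profile script that may be sourced more than once, it is worth appending the directory only when it is missing. A minimal sketch, where path_append is a hypothetical helper and /cygdrive/c/Ant is how C:\Ant from step 2 appears under Cygwin's default mount prefix:

```shell
# Hypothetical helper: append a directory to PATH unless it is already there,
# so re-sourcing a profile script does not keep growing PATH.
path_append() {
    case ":$PATH:" in
        *":$1:"*) ;;                     # already present: leave PATH alone
        *) PATH="${PATH:+$PATH:}$1" ;;   # append, adding ':' only if needed
    esac
    export PATH
}

ANT_HOME=${ANT_HOME:-/cygdrive/c/Ant}
export ANT_HOME
path_append "$ANT_HOME/bin"

# Step 4 from a Bash prompt, once Ant is installed:
#   ant -version
```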


What just happened?

We have prepared Windows with the necessary underlying utilities to host Android development tools: Cygwin and the Java Development Kit.

Cygwin is an open source software collection that allows the Windows platform to emulate a Unix-like environment. It aims at natively integrating software based on the POSIX standard (such as Unix, Linux, and so on) into Windows. It can be considered as an intermediate layer between applications originating from Unix/Linux (but natively recompiled on Windows) and the Windows OS itself.
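One visible effect of this intermediate layer is the way Windows drives are exposed as POSIX paths. With Cygwin's default settings, drive C: is mounted under /cygdrive/c, and the cygpath -u command used in the Preface conventions converts a Windows path into that form. The sketch below imitates the conversion for plain drive-letter paths only, to make the mapping concrete; win_to_cygwin is a hypothetical name, and the real cygpath also handles custom mount points, UNC paths, and other cases, so prefer it in practice.

```shell
# Hypothetical, simplified imitation of `cygpath -u` for paths such as
# C:\Android\android-ndk, assuming Cygwin's default /cygdrive prefix.
win_to_cygwin() {
    drive=$(printf '%s' "$1" | cut -c1 | tr '[:upper:]' '[:lower:]')
    rest=$(printf '%s' "$1" | cut -c3- | tr '\\' '/')
    printf '/cygdrive/%s%s\n' "$drive" "$rest"
}

win_to_cygwin 'C:\Android\android-ndk'   # prints /cygdrive/c/Android/android-ndk
```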

We have also deployed a Java Development Kit in version 1.6 and checked that it works properly from the command line. Because the Android SDK uses generics, JDK 1.5 is the minimum version required when developing with Android. The JDK is simple to install on Windows, but it is important to make sure a previous installation, such as a JRE (Java Runtime Environment, which aims at executing applications but not developing them), is not interfering. This is why we have defined the JAVA_HOME and PATH environment variables to ensure the proper JDK is used.
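The minimum-version rule can also be checked from a script. Here is a minimal sketch that parses the first line printed by java -version (for instance, java version "1.6.0_29"). The function name is hypothetical, the parsing assumes that usual banner format, and since a JRE answers java -version too, it does not tell a JDK apart from a JRE.

```shell
# Hypothetical check: succeeds if a `java -version` banner reports 1.5 or later.
# Handles "1.6.0_29"-style numbers as well as newer "11.0.2" or "17" ones.
java_is_at_least_1_5() {
    # Extract the quoted version string, e.g. 1.6.0_29.
    ver=$(printf '%s\n' "$1" | sed -n 's/.*version "\([^"]*\)".*/\1/p' | head -n 1)
    major=${ver%%.*}                 # text before the first dot
    major=${major%%[!0-9]*}          # keep leading digits only
    rest=${ver#*.}                   # text after the first dot
    minor=${rest%%[!0-9]*}
    major=${major:-0}
    minor=${minor:-0}
    [ "$major" -gt 1 ] || { [ "$major" -eq 1 ] && [ "$minor" -ge 5 ]; }
}

# java -version writes to standard error, hence the 2>&1.
if command -v java >/dev/null 2>&1; then
    if java_is_at_least_1_5 "$(java -version 2>&1)"; then
        echo "Java version is recent enough for the Android SDK"
    else
        echo "Java 1.5 or later is required" >&2
    fi
fi
```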

Finally, we have installed the Ant utility that we are going to use in the next chapter to build projects manually. Ant is not required for Android development but is a very good solution to set up a continuous integration chain.

Where is Java’s home?

Defining the JAVA_HOME environment variable is not required. However, JAVA_HOME is a popular convention among Java applications, Ant being one of them. Ant first looks for the java command in JAVA_HOME (if defined) before looking in PATH. If you install an up-to-date JDK in another location later on, do not forget to update JAVA_HOME.
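The following minimal shell sketch illustrates the lookup order described above. The function name find_java is hypothetical. It shows the JAVA_HOME convention only and is not Ant's actual launcher logic. It assumes a POSIX-compatible shell, such as the Bash shipped with Cygwin.

```shell
# find_java (hypothetical helper): print the java launcher that a tool
# following the JAVA_HOME convention would pick. Illustrative sketch only,
# not Ant's real launcher code.
find_java() {
  # 1. Prefer $JAVA_HOME/bin/java when JAVA_HOME is set and points to a JDK
  if [ -n "${JAVA_HOME:-}" ] && [ -x "$JAVA_HOME/bin/java" ]; then
    printf '%s\n' "$JAVA_HOME/bin/java"
    return 0
  fi
  # 2. Otherwise fall back to the first java found on PATH (fails if none)
  command -v java
}

# Example: find_java || echo "No java command found" >&2
```

If JAVA_HOME points to an outdated or deleted JDK, the first branch is skipped only when the launcher is missing. A stale but still present JDK would still win over PATH, which is why the sidebar recommends updating JAVA_HOME.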

Installing Android development kits on Windows

Once the JDK is installed on our system, we can start installing the Android SDK and NDK to create, compile, and debug Android programs.

Chapter 1

[ 13 ]

Time for action – installing Android SDK and NDK on Windows

1. Open your web browser and go to http://developer.android.com/sdk. This web page lists all available SDKs, one for each platform.

2. Download the Android SDK for Windows, packaged as an EXE installer.

3. Then, go to http://developer.android.com/sdk/ndk and download the Android NDK (not SDK!) for Windows, packaged as a ZIP archive this time.

4. Execute the Android SDK installer. Select an appropriate installation location (for example, C:\Android\android-sdk), knowing that the Android SDK and NDK together can take more than 3 GB of disk space (currently!) with all official API versions installed. As a precaution, avoid any spaces in the target installation path.

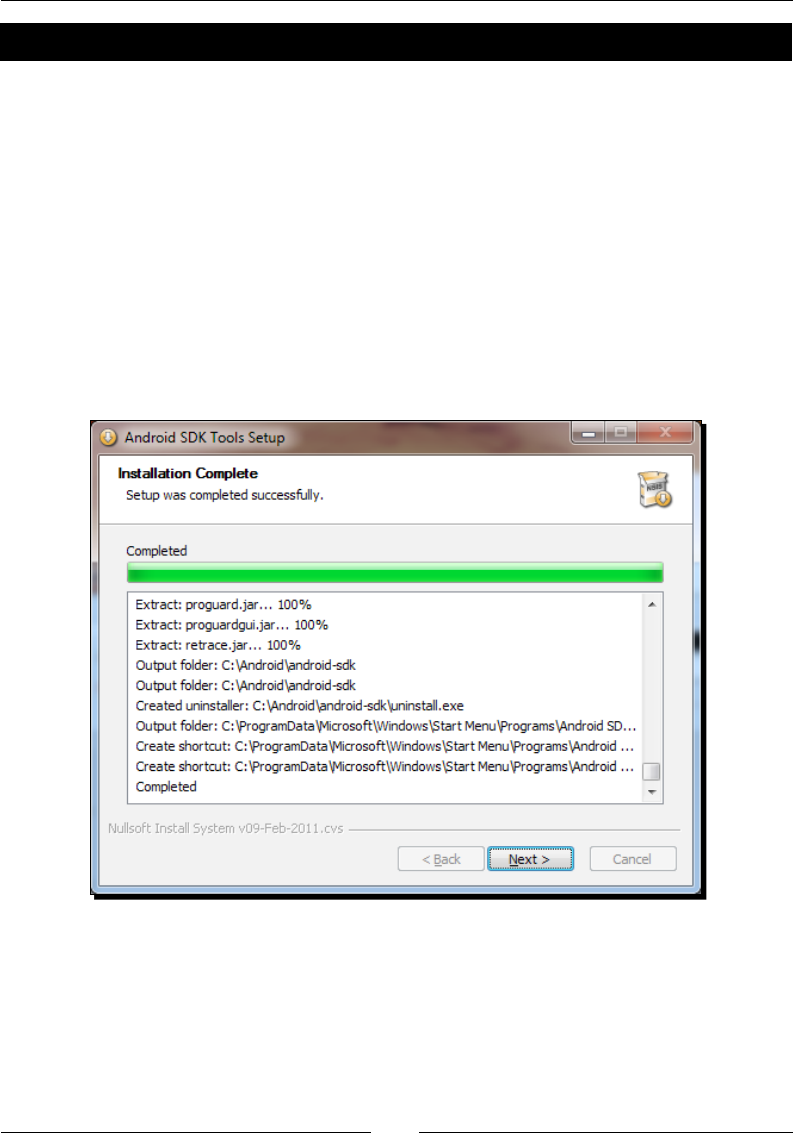

5. Follow the installation wizard until the end. Check the Start SDK Manager option:

6. The Android SDK and AVD Manager is launched. The Package installation window appears automatically.

7. Check the Accept All option and click on Install to start the installation of Android components:

8. After a few minutes, all packages get downloaded and a message asking to restart the ADB service (the Android Debug Bridge) appears. Validate by clicking on Yes.

9. Close the application.

10. Now, unzip the Android NDK archive into its final location (for example, C:\Android\android-ndk). Again, avoid any spaces in the installation path (or some problems could be encountered with Make).

To easily access Android utilities from the command line, let's define the environment variables:

11. Open the Environment Variables system window, as we did in the previous part. Inside the System variables list, insert the ANDROID_SDK and ANDROID_NDK variables with the corresponding directories as values.

12. Append %ANDROID_SDK%\tools, %ANDROID_SDK%\platform-tools and %ANDROID_NDK%, all separated by a semicolon, to your PATH.

13. All the Windows environment variables should be imported automatically by Cygwin when launched. Let's verify this by opening a Cygwin terminal and checking whether the NDK is available:

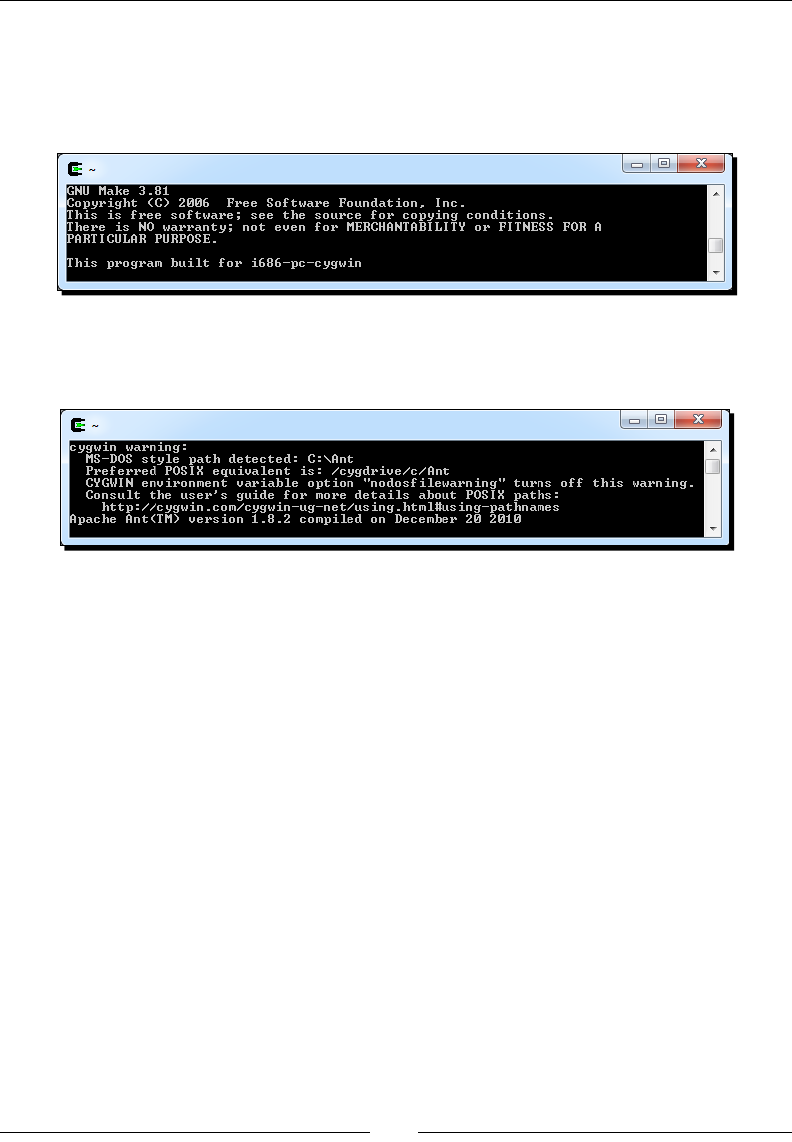

$ ndk-build --version

14. Now, check the Ant version to make sure it is properly working on Cygwin:

$ ant -version

The first time, Cygwin should emit a surprising warning: paths are in MS-DOS style and not POSIX. Indeed, Cygwin paths are emulated and should look similar to /cygdrive/<Drive letter>/<Path to your directory with forward slashes>. For example, if Ant is installed in c:\ant, then the path should be indicated as /cygdrive/c/ant.

15. Let's fix this. Go to your Cygwin directory. There, you should find a directory named home/<your user name> containing a .bash_profile file. Open it for editing.

16. At the end of the script, translate the Windows environment variables into Cygwin variables with the cygpath utility. PATH does not need to be translated, as this essential variable is processed automatically by Cygwin. Make sure to use the backquote character (`), which runs a command inside another one and substitutes its output. Do not use the apostrophe ('), which has a different meaning in Bash: it quotes a literal string. An example .bash_profile is provided with this book:

export ANT_HOME=`cygpath -u "$ANT_HOME"`
export JAVA_HOME=`cygpath -u "$JAVA_HOME"`
export ANDROID_SDK=`cygpath -u "$ANDROID_SDK"`
export ANDROID_NDK=`cygpath -u "$ANDROID_NDK"`

17. Reopen a Cygwin window and check the Ant version again. No warning is issued this time:

$ ant -version

What just happened?

We have downloaded and deployed both the Android SDK and NDK and made them available through the command line using environment variables.

We have also launched the Android SDK and AVD Manager, which manages SDK component installation, updates, and emulation features. This way, new SDK API releases as well as third-party components (for example, the Samsung Galaxy Tablet emulator) are made available to your development environment without having to reinstall the Android SDK.

If you have trouble connecting at step 7, you may be behind a proxy. In this case, the Android SDK and AVD Manager provides a Settings section where you can specify your proxy settings.

At step 16, we converted the Windows paths defined inside the environment variables into Cygwin paths. This path form, which may look odd at first, is used by Cygwin to emulate Windows paths as if they were Unix paths. The cygdrive directory is similar to a mount or media directory on Unix and contains every Windows drive as a mounted file system.

Cygwin paths

The rule to remember while using paths with Cygwin is that they must contain forward slashes only and the drive letter is replaced by /cygdrive/[Drive Letter]. But beware: file names in Windows and Cygwin are case-insensitive, unlike on real Unix systems.

Like any Unix system, Cygwin has a root directory named slash (/). But since there is no real root directory in Windows, Cygwin emulates it in its own installation directory. In a Cygwin command line, enter the following command to see its content:

$ ls /

These files are the ones located in your Cygwin directory (except /proc, which is an in-memory directory). This explains why we updated .bash_profile in the home directory itself, which is located inside the Cygwin directory.

Utilities packaged with Cygwin usually expect Cygwin-style paths, although Windows-style paths work most of the time. Thus, although we could have avoided the conversion in .bash_profile (at the price of a warning), the natural way to work with Cygwin and avoid future trouble is to use Cygwin paths. However, Windows utilities (for example, java.exe) generally do not support Cygwin paths, in which case an inverse path conversion is required when calling them. To perform conversions, the cygpath utility provides the following options:

-u: To convert Windows paths to Unix paths

-w: To convert Unix paths to Windows paths

-p: To convert a list of paths (separated by ; on Windows and : on Unix)
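As a rough illustration of what the -u option does to a typical path, the hypothetical win_to_cygdrive function below applies the basic rule in plain shell and awk. It replaces the drive letter with /cygdrive/<letter> and turns backslashes into forward slashes. This is a simplified sketch, not a replacement for cygpath, which also honors the Cygwin mount table and handles other path forms.

```shell
# win_to_cygdrive PATH (hypothetical helper): rewrite a Windows path such as
# C:\Android\android-ndk into Cygwin's /cygdrive/c/Android/android-ndk form.
# Simplified sketch of the basic rule only; cygpath -u also knows the mount
# table, UNC paths, and so on.
win_to_cygdrive() {
  printf '%s\n' "$1" | awk '{
    gsub(/\\/, "/")                  # backslashes -> forward slashes
    if ($0 ~ /^[A-Za-z]:/)           # leading drive letter, e.g. "C:"
      $0 = "/cygdrive/" tolower(substr($0, 1, 1)) substr($0, 3)
    print
  }'
}

# Example (prints /cygdrive/c/ant):
# win_to_cygdrive 'c:\ant'
```

Note the single quotes around the Windows path in the example. Inside double quotes or unquoted, the shell could interpret the backslashes before the function sees them.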

While editing .bash_profile at steps 15 and 16, you may run into some difficulties: some weird square characters may appear and the entire text may be on one very long line! This is because the file uses Unix line endings. So use a Unix-compatible file editor (such as Eclipse, PSPad, or Notepad++) when editing Cygwin files. If you have already run into trouble, you can either use your editor's End-Of-Line conversion feature (Notepad++ and PSPad provide one) or apply the command-line dos2unix utility (provided with Cygwin) to the offending file.
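If dos2unix is not at hand, the same clean-up can be sketched with the standard tr utility. The helper names has_crlf and strip_crlf are hypothetical. The sketch assumes a plain text file that can safely be copied through a temporary file.

```shell
# has_crlf FILE (hypothetical helper): succeed if FILE contains a carriage
# return (\r), the usual sign of Windows (CR+LF) line endings.
has_crlf() {
  grep -q "$(printf '\r')" "$1"
}

# strip_crlf FILE (hypothetical helper): delete every carriage return from
# FILE, roughly what dos2unix does to a Windows-formatted text file.
strip_crlf() {
  tmp_file=$(mktemp) || return 1
  # Write to a temporary file first, then cat it back (rather than mv) so
  # the original file keeps its permissions.
  tr -d '\r' < "$1" > "$tmp_file" && cat "$tmp_file" > "$1"
  status=$?
  rm -f "$tmp_file"
  return "$status"
}

# Example: has_crlf ~/.bash_profile && strip_crlf ~/.bash_profile
```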

Carriage return on Cygwin

Unix files use a simple line-feed character (better known as \n) to indicate an end of line, whereas Windows uses a carriage return (CR or \r) plus a line feed. Classic Mac OS (before Mac OS X), on the other hand, used a carriage return only. Windows newline markers can cause lots of trouble in Cygwin shell scripts, which should be kept in Unix format.

This is the end of the section dedicated to Windows setup. If you are not a Mac or Linux user, you can jump to the Setting up the Eclipse development environment section.

Setting up Mac OS X

Apple computers and Mac OS X have a reputation for being simple and easy to use. And honestly, this reputation is rather deserved when it comes to Android development. Indeed, Mac OS X is based on Unix, is well adapted to run the NDK toolchain, and has a recent JDK installed by default. Mac OS X comes with almost everything we need, with the exception of the Developer Tools, which need to be installed separately. These Developer Tools include the XCode IDE, many Mac development utilities, and also some Unix utilities, such as Make and Ant.

Time for action – preparing Mac OS X for Android development

All developer tools are included in the XCode installation package (version 4 at the time this book was written). There are four ways to get this package:

- If you have Mac OS X installation media, open it and look for the XCode installation package

- XCode is also provided on the App Store for free (but this has changed recently and may change in the future too)

- XCode can also be downloaded from the Apple website with a paid developer program subscription at http://developer.apple.com/xcode/

- The older version 3, compatible with Android development tools, is available for free as a disc image from the same page with a free Apple Developer account

Using the most appropriate solution for your case, let's install XCode:

1. Find your XCode installation package and run it. Select the UNIX Development option when the customization screen appears. Finish the installation. We are done!

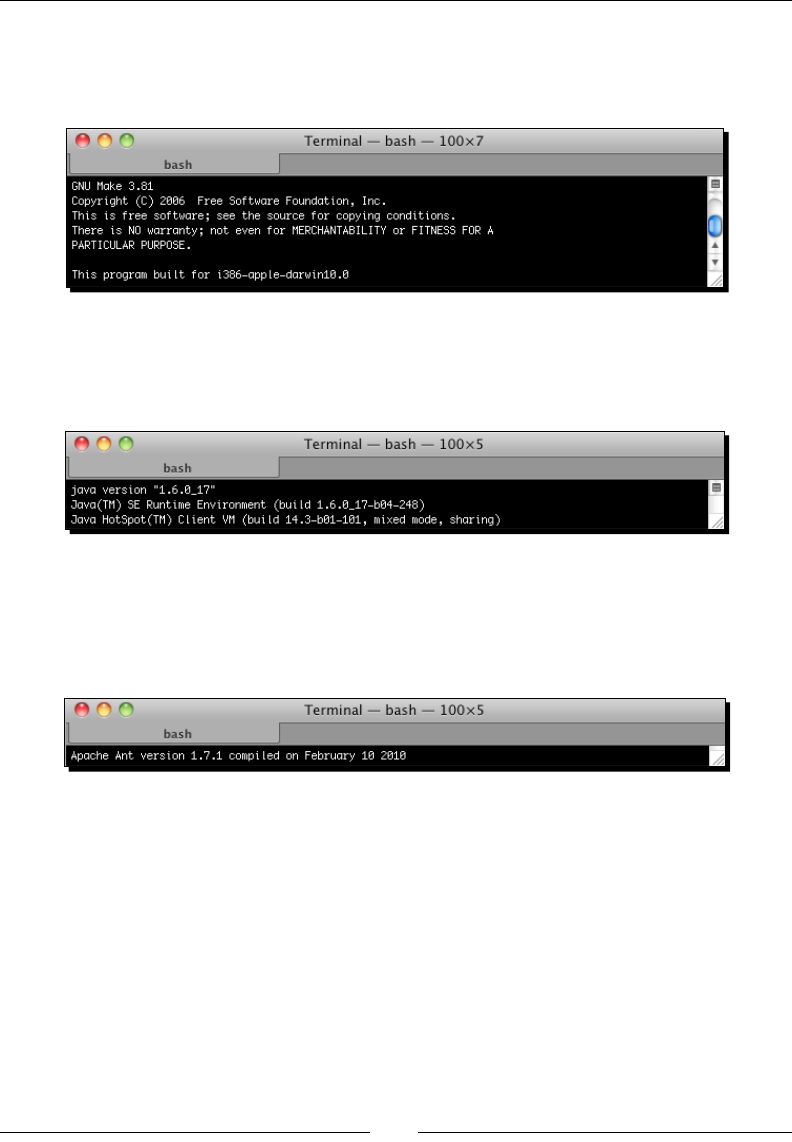

2. To develop with the Android NDK, we need the Make build tool for native code. Open a terminal prompt and ensure Make works correctly:

$ make --version

3. To run Eclipse and allow compilation of Android Java code to bytecode, a Java Development Kit is required. Let's check that the default Mac OS X JDK works fine:

$ java -version

4. To compile projects from the command line, the Android SDK supports Ant, a Java-based build automation utility. Still in a terminal, ensure Ant is correctly installed:

$ ant -version
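The three checks above can be grouped into a small shell helper. check_tools is a hypothetical name. This sketch only verifies that each command is found on PATH and does not check versions.

```shell
# check_tools NAME... (hypothetical helper): report whether each command
# can be found on PATH. Returns 1 if at least one is missing, 0 otherwise.
check_tools() {
  missing=0
  for tool in "$@"; do
    if location=$(command -v "$tool"); then
      printf 'found   %s -> %s\n' "$tool" "$location"
    else
      printf 'MISSING %s\n' "$tool"
      missing=1
    fi
  done
  return "$missing"
}

# Example, for the three tools used in this section:
# check_tools make java ant
```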

What just happened?

We have prepared our Mac OS X to host Android development tools. And as usual with

Apple, that was rather easy!

We have checked that the Java Development Kit in version 1.6 works properly from the command line. Because the Android SDK uses generics, JDK 1.5 is the minimum version required for Android development.

We have installed the Developer Tools, which include Make (to run the NDK compiler) and Ant (which we are going to use in the next chapter to build projects manually). Ant is not required for Android development, but it is a very good solution for setting up a continuous integration chain.

Installing Android development kits on Mac OS X

Once a JDK is installed on your system, we can start installing the Android SDK and NDK to create, compile, and debug Android programs.

Time for action – installing Android SDK and NDK on Mac OS X

1. Open your web browser and go to http://developer.android.com/sdk. This web page lists all available SDKs, one for each platform.

2. Download the Android SDK for Mac OS X, which is packaged as a ZIP archive.

3. Then, go to http://developer.android.com/sdk/ndk and download the Android NDK (not SDK!) for Mac OS X, packaged as a Tar/BZ2 archive this time.

4. Uncompress the downloaded archives separately into the directory of your choice (for example, /Developer/AndroidSDK and /Developer/AndroidNDK).

5. Let's declare these two directories as environment variables. From now on, we will refer to these directories as $ANDROID_SDK and $ANDROID_NDK throughout this book. Assuming you use the default Bash command-line shell, create or edit your .profile file (be careful, this is a hidden file!) in your home directory and add the following variables:

export ANDROID_SDK="<path to your Android SDK directory>"
export ANDROID_NDK="<path to your Android NDK directory>"
export PATH="$PATH:$ANDROID_SDK/tools:$ANDROID_SDK/platform-tools:$ANDROID_NDK"

Downloading the example code

You can download the example code files for all Packt books you have purchased from your account at http://www.PacktPub.com. If you purchased this book elsewhere, you can visit http://www.PacktPub.com/support and register to have the files e-mailed directly to you.

6. Save the file and log out from your current session.

7. Log in again and open a terminal. Enter the following command:

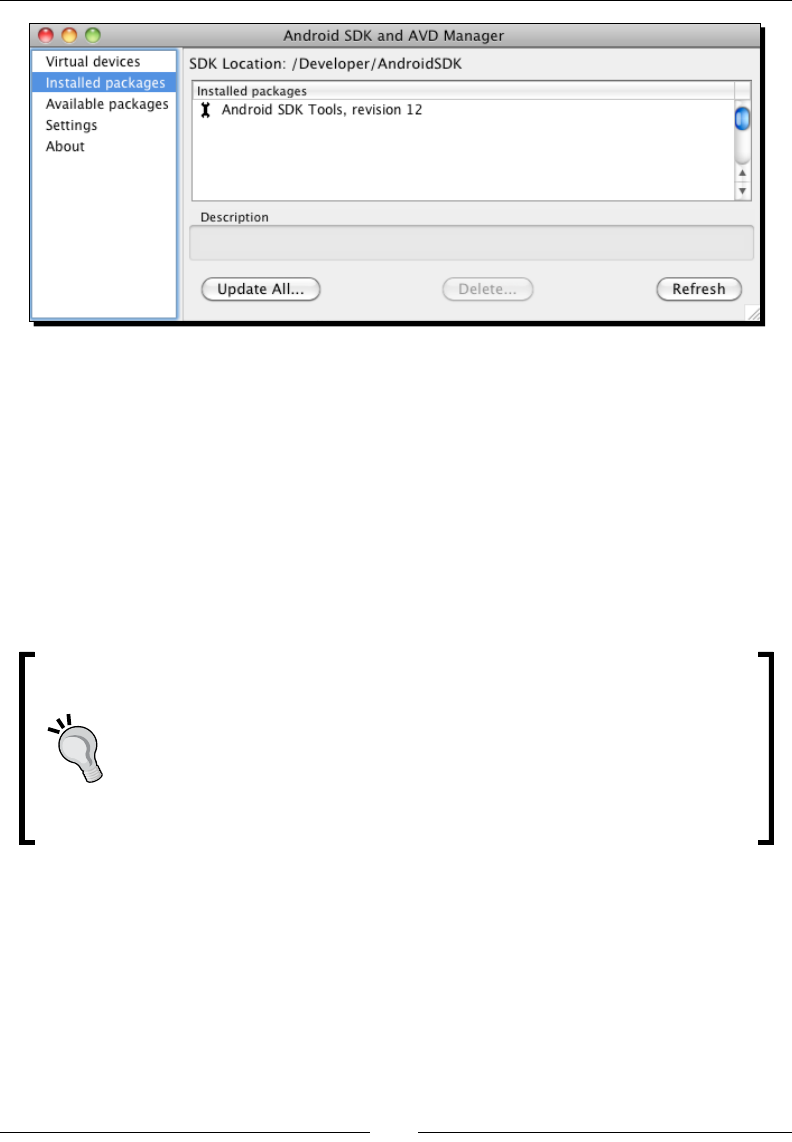

$ android

8. The Android SDK and AVD Manager window shows up.

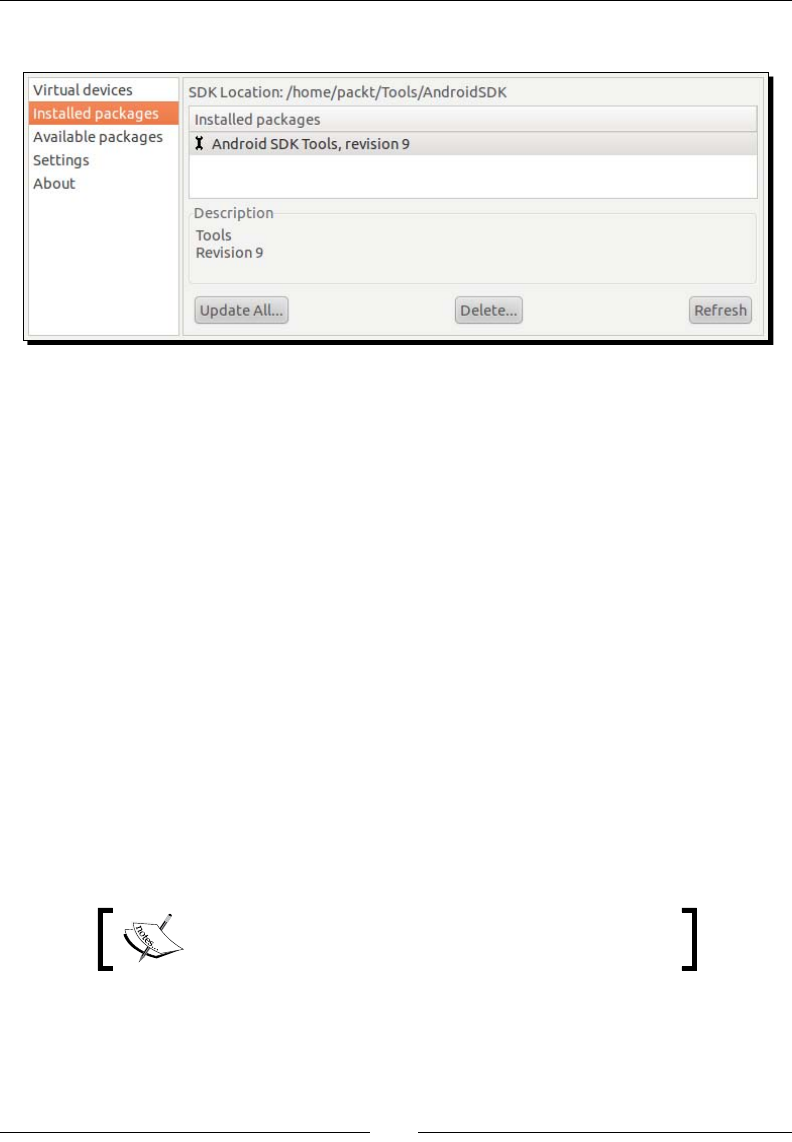

9. Go to the Installed packages section and click on Update All:

10. A package selection dialog appears. Select Accept All and then Install.

11. After a few minutes, all packages get downloaded and a message asking to restart the ADB service (the Android Debug Bridge) appears. Validate by clicking on Yes.

12. You can now close the application.
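The single export PATH=... line from step 5 appends the same directories again every time .profile is read. One possible alternative, assuming a POSIX shell, is a hypothetical path_append helper that skips directories already present. It is a sketch, not something provided by the Android SDK.

```shell
# path_append DIR (hypothetical helper): append DIR to PATH unless it is
# already there, so reading .profile more than once does not fill PATH
# with duplicates.
path_append() {
  case ":$PATH:" in
    *":$1:"*) ;;                    # already present: nothing to do
    *) PATH="${PATH:+$PATH:}$1" ;;  # append, no leading ':' if PATH is empty
  esac
}

# Possible use in ~/.profile instead of the single export PATH=... line:
# path_append "$ANDROID_SDK/tools"
# path_append "$ANDROID_SDK/platform-tools"
# path_append "$ANDROID_NDK"
# export PATH
```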

What just happened?

We have downloaded and deployed both Android SDK and NDK and made them available

through the command line using environment variables.

Mac OS X and environment variables

Mac OS X is tricky when it comes to environment variables. They can easily be declared in a .profile for applications launched from a terminal, as we just did. They can also be declared using an environment.plist file for GUI applications, which are not launched from Spotlight. A more powerful way to configure them is to define or update the /etc/launchd.conf system file (see http://developer.apple.com/).

We have also launched the Android SDK and AVD Manager, which manages the installation, updates, and emulation features of the SDK components. This way, new SDK API releases as well as third-party components (for example, the Samsung Galaxy Tablet emulator) are made available to your development environment without having to reinstall the Android SDK.

If you have trouble connecting at step 9, you may be behind a proxy. In this case, the Android SDK and AVD Manager provides a Settings section where you can specify your proxy settings.

This is the end of the section dedicated to Mac OS X setup. If you are not a Linux user, you can jump to the Setting up the Eclipse development environment section.

Setting up Linux

Although Linux is more naturally suited for Android development, as the Android toolchain is

Linux-based, some setup is necessary as well.

Time for action – preparing Ubuntu Linux for Android development

To work with the Android NDK, we need to check and install some system packages and utilities:

1. First, Glibc (the GNU C standard library, in version 2.7 or later) must be installed. It is

usually shipped with Linux systems by default. Check its version using the following

command:

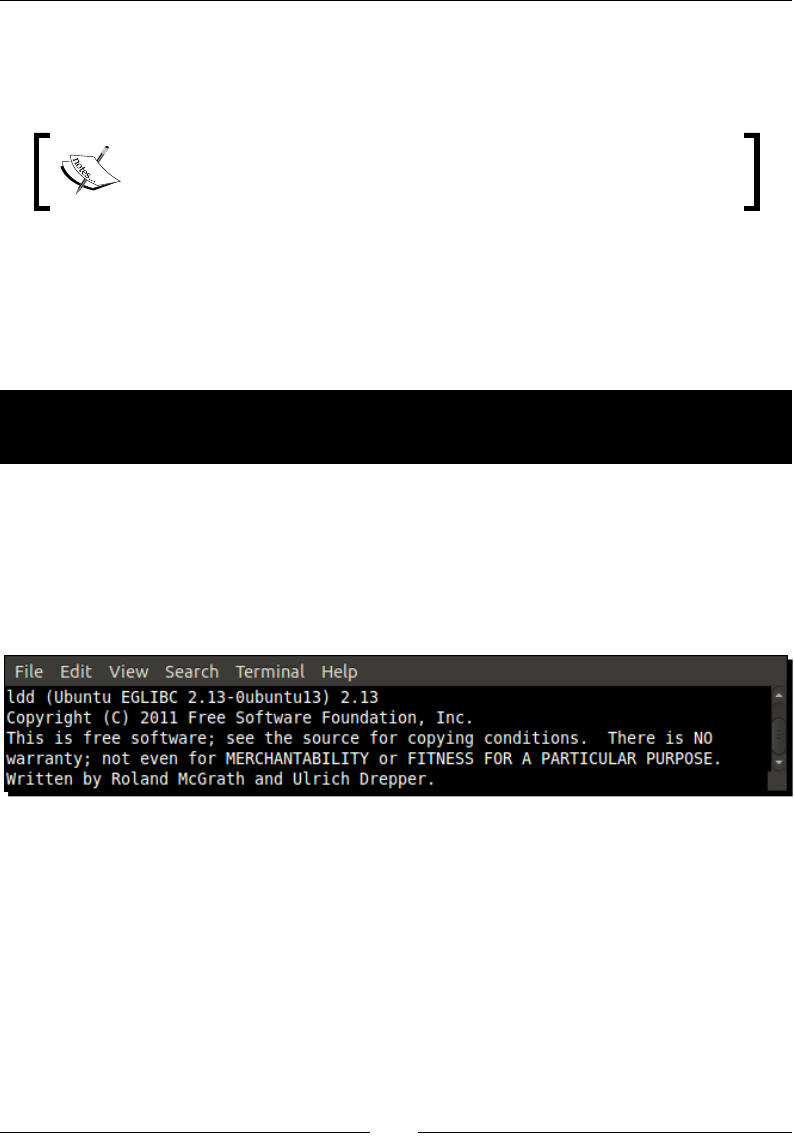

$ ldd --version

2. We also need the Make build tool for native code. Installation can be performed using the following command:

$ sudo apt-get install build-essential

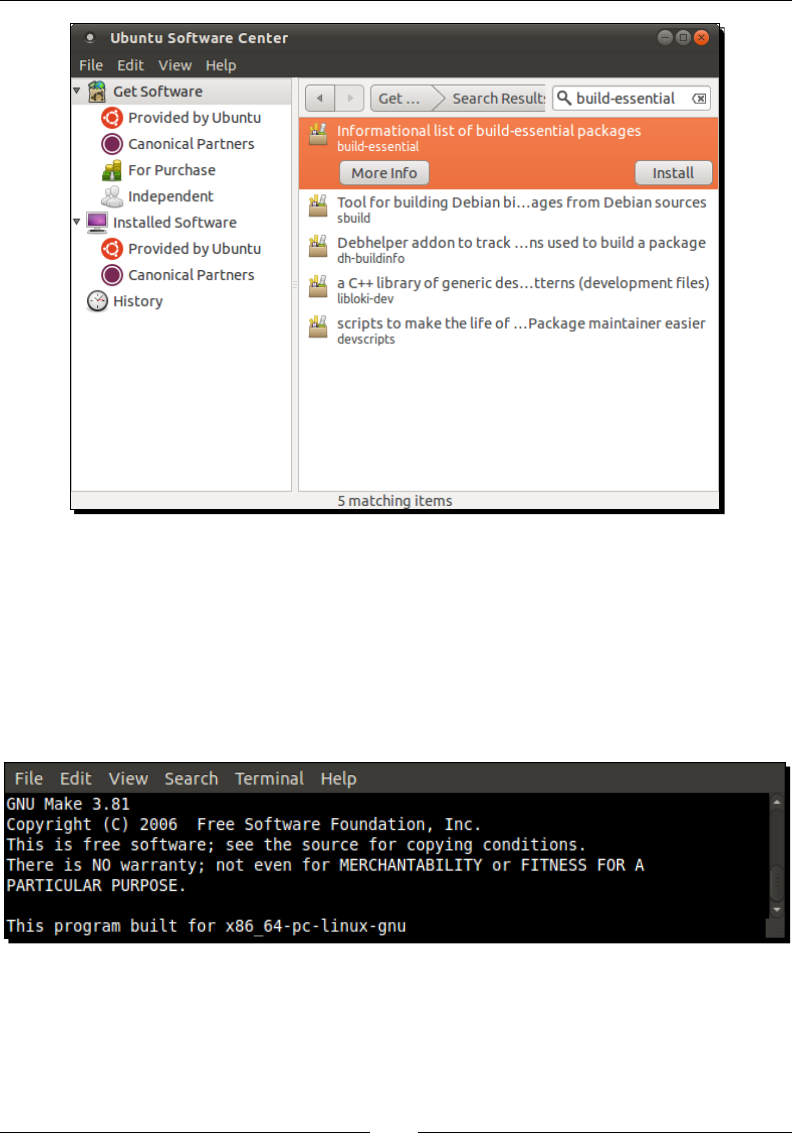

Alternatively, Make can be installed through the Ubuntu Software Center. Look for build-essential in the dedicated search box and install the packages found:

The build-essential package contains a minimal set of tools for compilation and packaging on Linux systems. It also includes GCC (the GNU C Compiler), which is not required for standard Android development, as the Android NDK already packages its own version.

3. To ensure that Make is correctly installed, type the following command. If correctly

installed, the version will be displayed:

$ make --version
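Step 1 requires Glibc 2.7 or later. Comparing version strings as plain text gets this wrong: 2.13 sorts before 2.7 alphabetically. The following sketch therefore compares the dotted fields numerically. version_ge is a hypothetical helper. The usage comment assumes that the version number ends the first line of ldd --version output. That holds on glibc-based distributions, but the exact wording varies.

```shell
# version_ge A B (hypothetical helper): succeed if dotted version A is
# greater than or equal to B, comparing numeric fields one by one
# (so 2.13 counts as newer than 2.7).
version_ge() {
  awk -v a="$1" -v b="$2" 'BEGIN {
    na = split(a, x, "."); nb = split(b, y, ".")
    n = (na > nb) ? na : nb
    for (i = 1; i <= n; i++) {
      xi = (i <= na) ? x[i] + 0 : 0   # missing fields count as 0
      yi = (i <= nb) ? y[i] + 0 : 0
      if (xi > yi) exit 0
      if (xi < yi) exit 1
    }
    exit 0                            # all fields equal
  }'
}

# Usage sketch (assumes the version is the last word of the first line):
# glibc_version=$(ldd --version 2>/dev/null | head -n 1 | awk '{ print $NF }')
# version_ge "$glibc_version" 2.7 && echo "Glibc $glibc_version is recent enough"
```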

Special note for 64-bit Linux owners

We also need 32-bit libraries installed to avoid compatibility problems. This can be done using the following command (to execute in a command-line prompt) or, again, through the Ubuntu Software Center:

$ sudo apt-get install ia32-libs

To run Eclipse and allow compilation of Android Java code to bytecode, a Java Development Kit is required. We need to download and install the Oracle Sun Java Development Kit. On Ubuntu, this can be done from the Synaptic Package Manager:

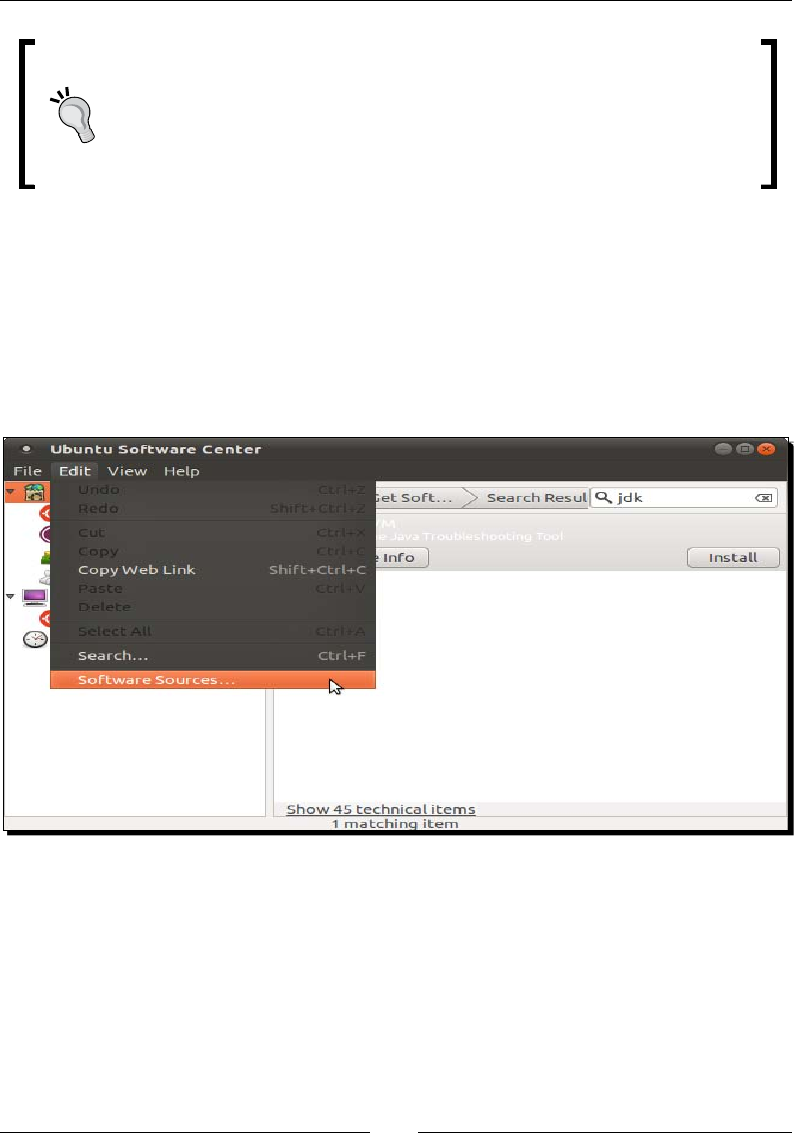

1. Open the Ubuntu System | Administration menu and select Synaptic Package Manager (or open your Linux package manager if you use another Linux distribution).

2. Go to the Edit | Software Sources menu.

3. In the Software Sources dialog, open the Other Software tab.

4. Check the Canonical Partners line and close the dialog:

5. The package cache synchronizes automatically with the Internet, and after a few seconds or minutes some new software is made available in the Canonical Partners section.

6. Find Sun Java™ Development Kit (JDK) 6 (or later) and click on Install. You are also advised to install the Lucida TrueType fonts (from the Sun JRE) and the Java(TM) Plug-in packages.

7. Accept the license (after reading it carefully, of course!). Be careful, as it may open in the background.

8. When the installation is finished, close the package manager.

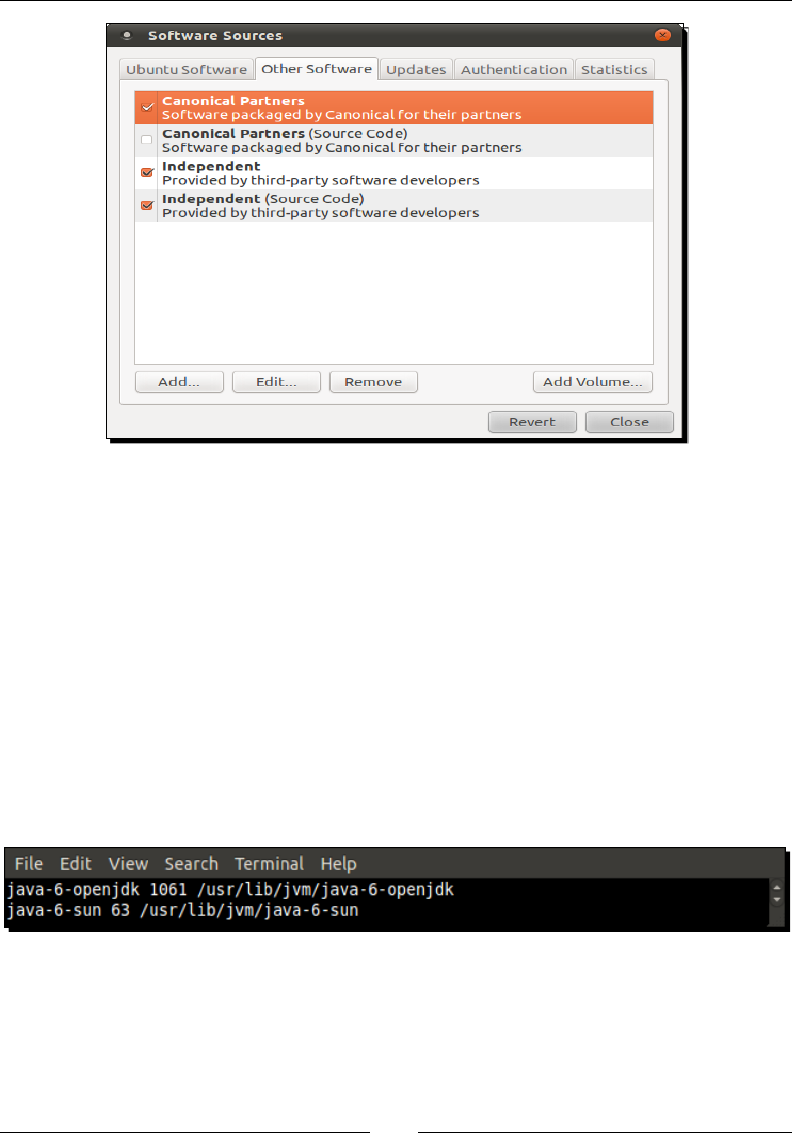

9. Although the Sun JDK is now installed, it is not yet active: OpenJDK is still used by default. Let's activate the Sun JRE through the command line. First, list the available JDKs:

$ update-java-alternatives -l

10. Then, activate the Sun JRE using the identifier returned previously:

$ sudo update-java-alternatives -s java-6-sun

11. Open a terminal and check that the installation is OK by typing:

$ java -version

The Android SDK supports Ant, a Java-based build automation utility, to compile projects from the command line. Let's install it.

1. Install Ant with the following command or with the Ubuntu Software Center:

$ sudo apt-get install ant

2. Check whether Ant is working properly:

$ ant -version

What just happened?

We have prepared our Linux operating system with the necessary utilities to host Android development tools.

We have installed a Java Development Kit in version 1.6 and checked that it works properly from the command line. Because the Android SDK uses generics, JDK 1.5 is the minimum version required for Android development.
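To check the 1.5 minimum from a script, you can reduce the version string printed by java -version to its feature release number. The sketch below assumes the usual first-line formats: java version "1.6.0_26" for older JDKs and openjdk version "11.0.2" for Java 9 and later. java_feature_version is a hypothetical helper name.

```shell
# java_feature_version LINE (hypothetical helper): extract the feature
# release number from the first line printed by `java -version`, e.g.
#   java version "1.6.0_26"        -> 6   (legacy 1.x numbering)
#   openjdk version "11.0.2" ...   -> 11  (numbering used since Java 9)
java_feature_version() {
  printf '%s\n' "$1" | awk -F'"' '{
    n = split($2, v, "[._-]")      # "1.6.0_26" -> 1, 6, 0, 26
    if (n == 0) exit 1             # no quoted version string found
    print ((v[1] + 0 == 1) ? v[2] : v[1])
  }'
}

# Usage sketch (java -version writes to stderr, hence 2>&1):
# line=$(java -version 2>&1 | head -n 1)
# [ "$(java_feature_version "$line")" -ge 5 ] && echo "JDK 1.5 or later"
```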

You may wonder why we bothered installing the Sun JDK while OpenJDK is already ready to use. The reason is simply that OpenJDK is not officially supported by the Android SDK. If you want to avoid any possible interaction with OpenJDK, consider removing it entirely from your system. Go to the Provided by Ubuntu section in the Ubuntu Software Center and click on Remove for each OpenJDK line. For more information, see the official Ubuntu documentation: http://help.ubuntu.com/community/Java.

Finally, we have installed the Ant utility, which we are going to use in the next chapter to build projects manually. Ant is not required for Android development, but it is a very good solution for setting up a continuous integration chain.

Since Java 7, the Sun JDK is no longer available in Linux repositories. OpenJDK has become the official Java implementation.

Installing Android development kits on Linux

Once the JDK is installed on your system, we can start installing the Android SDK and NDK to create, compile, and debug Android programs.

Time for action – installing Android SDK and NDK on Ubuntu

1. Open your web browser and go to http://developer.android.com/sdk. This web page lists all available SDKs, one for each platform.

2. Download the Android SDK for Linux, which is packaged as a Tar/GZ archive.

3. Then, go to http://developer.android.com/sdk/ndk and download the Android NDK (not SDK!) for Linux, packaged as a Tar/BZ2 archive this time.

4. Uncompress the downloaded archives separately into the directories of your choice (for example, ~/AndroidSDK and ~/AndroidNDK). On Ubuntu, you can use Archive Manager (right-click on the archive file and select Extract Here).

5. Let's declare these two directories as environment variables. From now on, we will refer to these directories as $ANDROID_SDK and $ANDROID_NDK throughout this book. Assuming you use a Bash command-line shell, edit your .profile file (be careful, this is a hidden file!) in your home directory and add the following variables:

export ANDROID_SDK="<path to your Android SDK directory>"
export ANDROID_NDK="<path to your Android NDK directory>"
export PATH="$PATH:$ANDROID_SDK/tools:$ANDROID_SDK/platform-tools:$ANDROID_NDK"

6. Save the file and log out from your current session.

7. Log in again and open a terminal. Enter the following command:

$ android

8. The Android SDK and AVD Manager window shows up.

9. Go to the Installed packages section and click on Update All:

10. A package selection dialog appears. Select Accept All and then Install.

11. After a few minutes, all packages get downloaded and a message asking to restart the ADB service (the Android Debug Bridge) appears. Validate by clicking on Yes.

12. You can now close the application.
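Before relying on the new PATH entries, it can help to confirm that the variables declared in .profile are set and that the directories they point to really exist, which catches typos in a path. A minimal sketch, with check_android_dirs as a hypothetical helper:

```shell
# check_android_dirs (hypothetical helper): verify that ANDROID_SDK and
# ANDROID_NDK are set and that the directories added to PATH in ~/.profile
# actually exist. Problems are reported on stderr.
check_android_dirs() {
  if [ -z "${ANDROID_SDK:-}" ] || [ -z "${ANDROID_NDK:-}" ]; then
    echo "ANDROID_SDK or ANDROID_NDK is not set" >&2
    return 1
  fi
  status=0
  for dir in "$ANDROID_SDK/tools" "$ANDROID_SDK/platform-tools" "$ANDROID_NDK"; do
    if [ ! -d "$dir" ]; then
      echo "missing directory: $dir" >&2
      status=1
    fi
  done
  return "$status"
}

# Example, in a new terminal after logging in again:
# check_android_dirs && echo "Android SDK/NDK paths look fine"
```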

What just happened?

We have downloaded and deployed both Android SDK and NDK and made them available

through the command line using environment variables.

We have also launched the Android SDK and AVD Manager, which manages the installation, updates, and emulation features of the SDK components. This way, new SDK API releases as well as third-party components (for example, the Samsung Galaxy Tablet emulator) are made available to your development environment without having to reinstall the Android SDK.

If you have trouble connecting at step 9, you may be behind a proxy. In this case, the Android SDK and AVD Manager provides a Settings section where you can specify your proxy settings.

This is the end of the section dedicated to the Linux setup. The following section applies to all platforms.

Setting up the Eclipse development environment

Command-line lovers and vi fanatics, please go to the next chapter or you may feel sick! For most humans, having a comfortable and visually friendly IDE is essential. Fortunately, Android works with the greatest of all: Eclipse!

Eclipse is the only officially supported IDE for the Android SDK, through the official Google plugin named ADT. But ADT is only for Java. Fortunately, Eclipse supports C/C++ as well through CDT, a general C/C++ plugin. Although not specific to Android, it works well with the NDK. The version of Eclipse used throughout this book is Helios (3.6).

Time for action – installing Eclipse

1. Open your web browser and go to http://www.eclipse.org/downloads/.

This web page lists all available Eclipse packages: for Java, J2EE, C++.

2. Download Eclipse IDE for Java Developers.

3. Extract the downloaded Tar/GZ file (on Linux and Mac OS X) or ZIP file (on Windows) with your archive manager.

4. Once extracted, run Eclipse by double-clicking on the eclipse executable inside its directory. On Mac OS X, make sure to execute the eclipse alias and not Eclipse.app, or else the environment variables defined earlier in .profile will not be available to Eclipse.

5. If Eclipse asks for a workspace, define a custom workspace directory if you want to (the default workspace is fine) and click OK.

6. After Eclipse has started, close the Welcome Page.

7. Go to the Help | Install New Software menu.

If a problem occurs in the next steps while accessing update sites, check your Internet connection. You may be disconnected, or your computer may be behind a proxy. In the latter case, it is possible to download the ADT plugin as an archive file from the ADT web page and install it manually (or to configure Eclipse to connect through a proxy, but that is another matter).

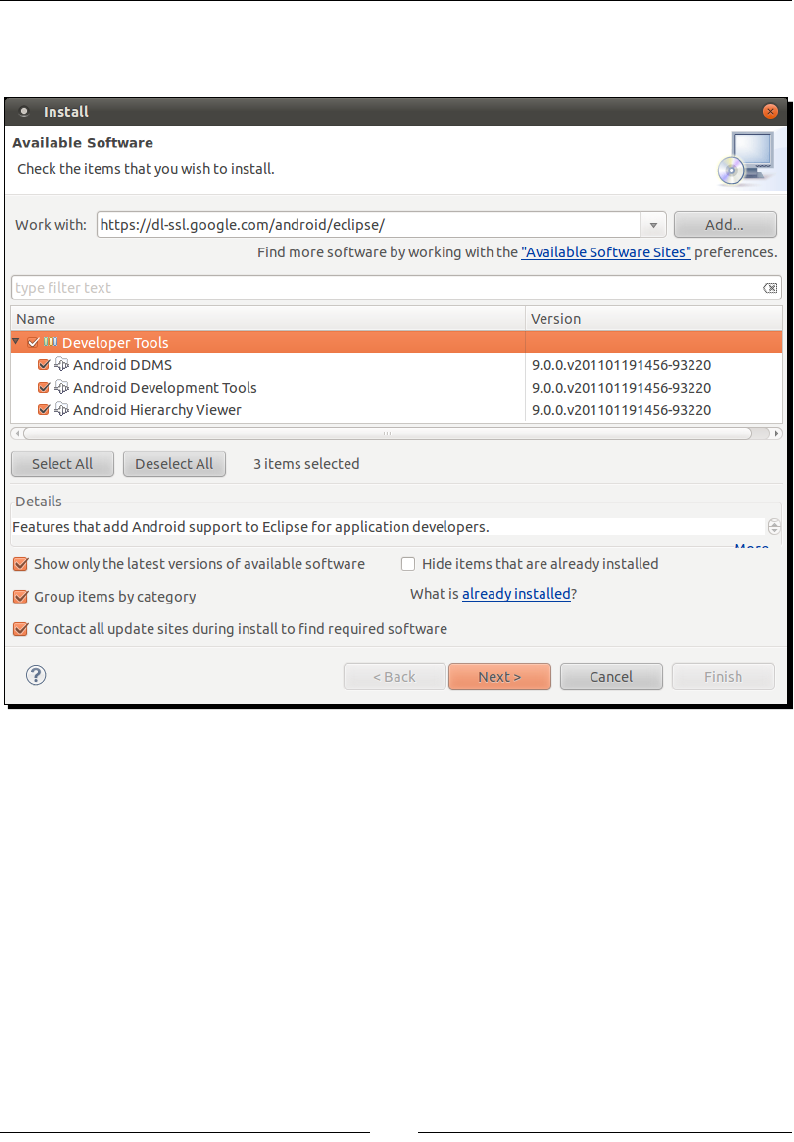

8. Enter https://dl-ssl.google.com/android/eclipse/ in the Work with field and validate.

9. After a few seconds, a Developer Tools plugin appears; select it and click on the Next button.

10. Follow the wizard and accept the conditions when asked. On the last wizard page, click on Finish.

11. ADT gets installed. A warning may appear indicating that the plugin content is unsigned. Ignore it and click on OK.

12. When finished, restart Eclipse as requested.

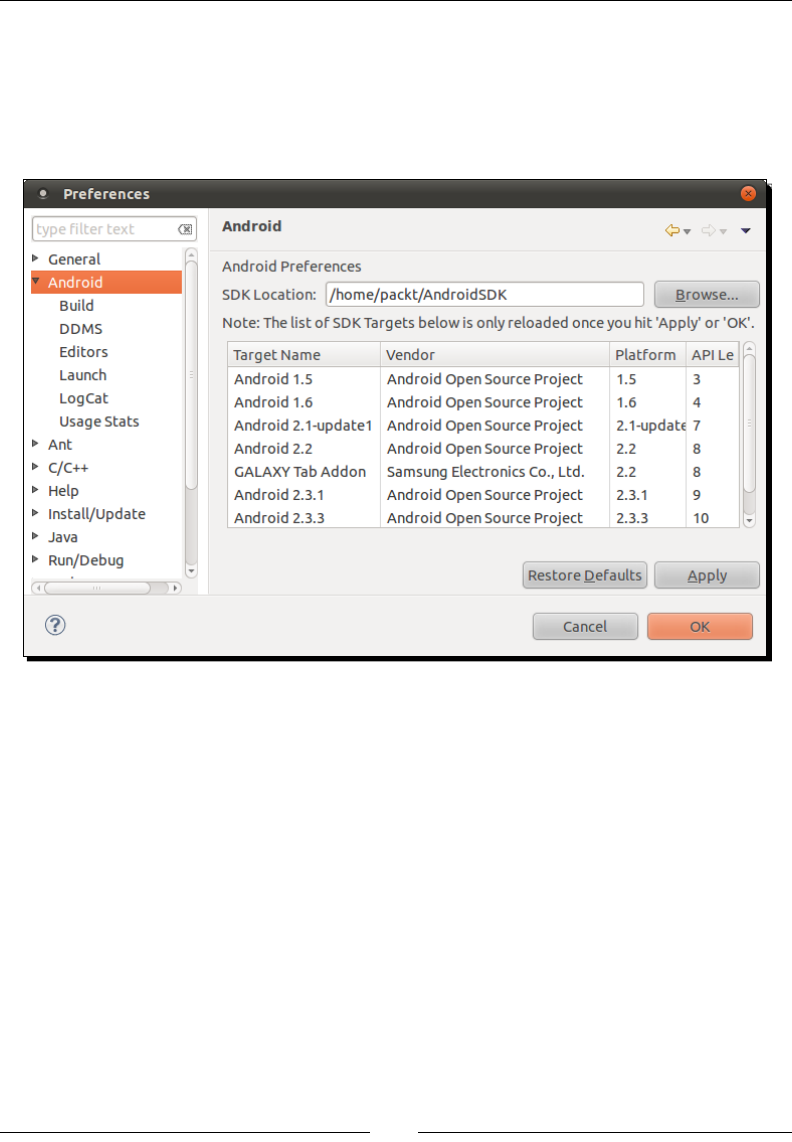

13. When Eclipse is restarted, go to the Window | Preferences menu (Eclipse | Preferences on Mac OS X) and go to the Android section.

14. Click on Browse and select the path to your Android SDK directory.

15. Validate preferences.

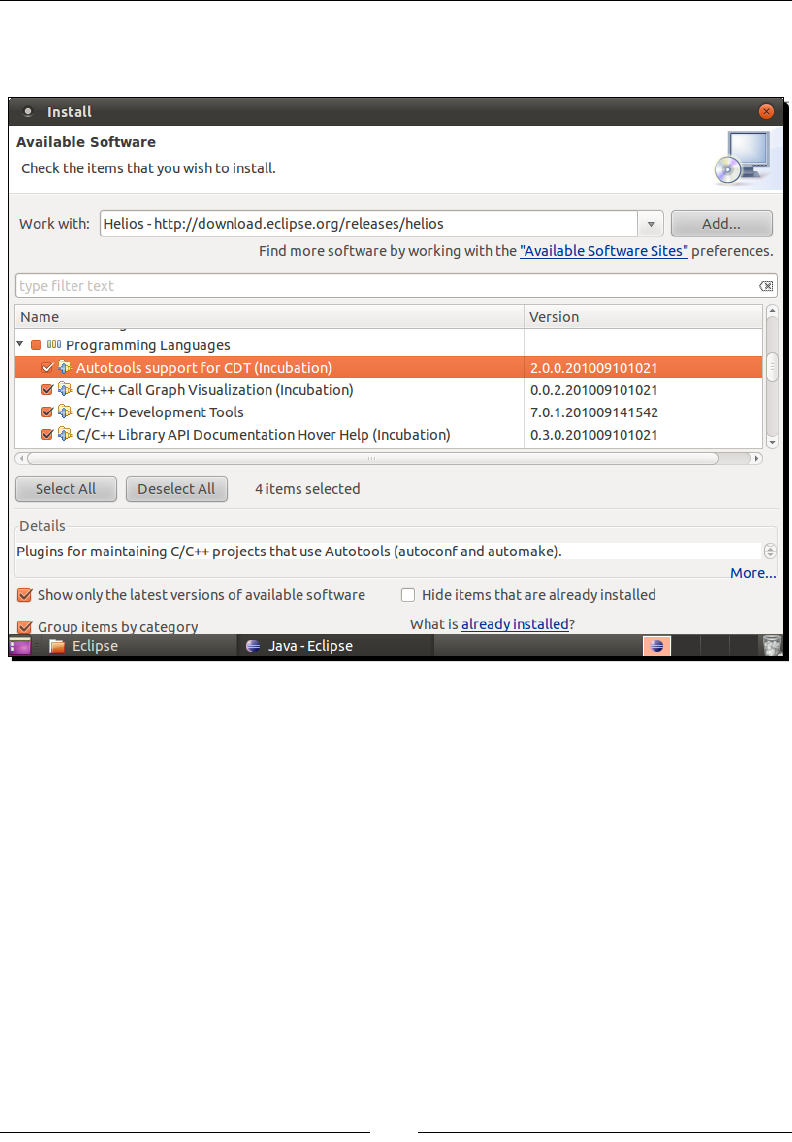

16. Go back to the Help | Install New Software... menu.

17. Open the Work with combobox and select the item containing the Eclipse version name (here Helios).

18. Find Programming Languages in the plugin tree and open it.

19. Select the CDT plugins. Incubation plugins are not essential. C/C++ Call Graph Visualization is for Linux only and cannot be installed on Windows or Mac OS X:

20. Follow the wizard and accept the conditions when asked. On the last wizard page, click on Finish.

21. When finished, restart Eclipse.

What just happened?

Eclipse is now installed, along with the official Android development plugin ADT and the C/C++ plugin CDT. ADT refers to the Android SDK location.

The main purpose of ADT is to ease the integration of Eclipse with the SDK development tools. It is perfectly possible to develop for Android without an IDE, using the command line only. But automatic compilation, packaging, deployment, and debugging are addictive features, which are hard to give up!

You may have noticed that no reference to the Android NDK is given to ADT. This is because ADT works for Java only. Fortunately, Eclipse is flexible enough to handle hybrid Java/C++ projects! We will talk about that further when creating our first Eclipse project.

In the same way, CDT allows easy integration of C/C++ compilation features into Eclipse.

We also “silently” installed JDT, the Java plugin for Eclipse. It is embedded in the Eclipse IDE

for Java Developers package. An Eclipse package including only CDT is also available on the

Eclipse Website.

More on ADT

The ADT update site given to Eclipse in step 8 comes from the official ADT documentation, which you can find at http://developer.android.com/sdk/eclipse-adt.html. This page is the main information point to visit if new versions of Eclipse or Android are released.

Emulating Android

The Android SDK provides an emulator to help developers who do not have a device (or are impatiently waiting for a new one!) get started quickly. Let's now see how to set it up.

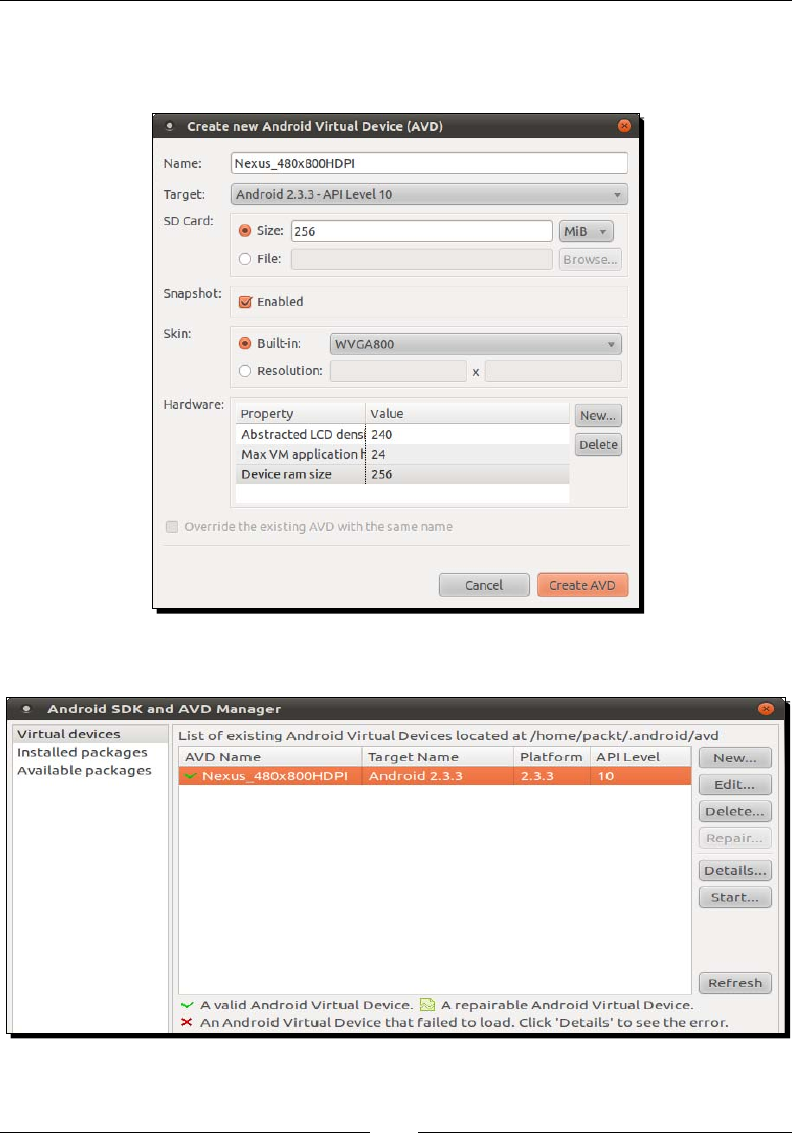

Time for action – creating an Android virtual device

1. Open the Android SDK and AVD Manager using either the command line (key in android) or the Eclipse toolbar button:

2. Click on the New button.

3. Give a name to this new emulated device: Nexus_480x800HDPI.

4. The target platform is Android 2.3.3.

5. Specify the SD card size: 256.

6. Enable snapshot.

7. Set the built-in resolution to WVGA800.

8. Leave the Hardware section the way it is.

9. Click on Create AVD.

10. The newly created virtual device now appears in the list:

11. Let’s check how it works: click on the Start buon.

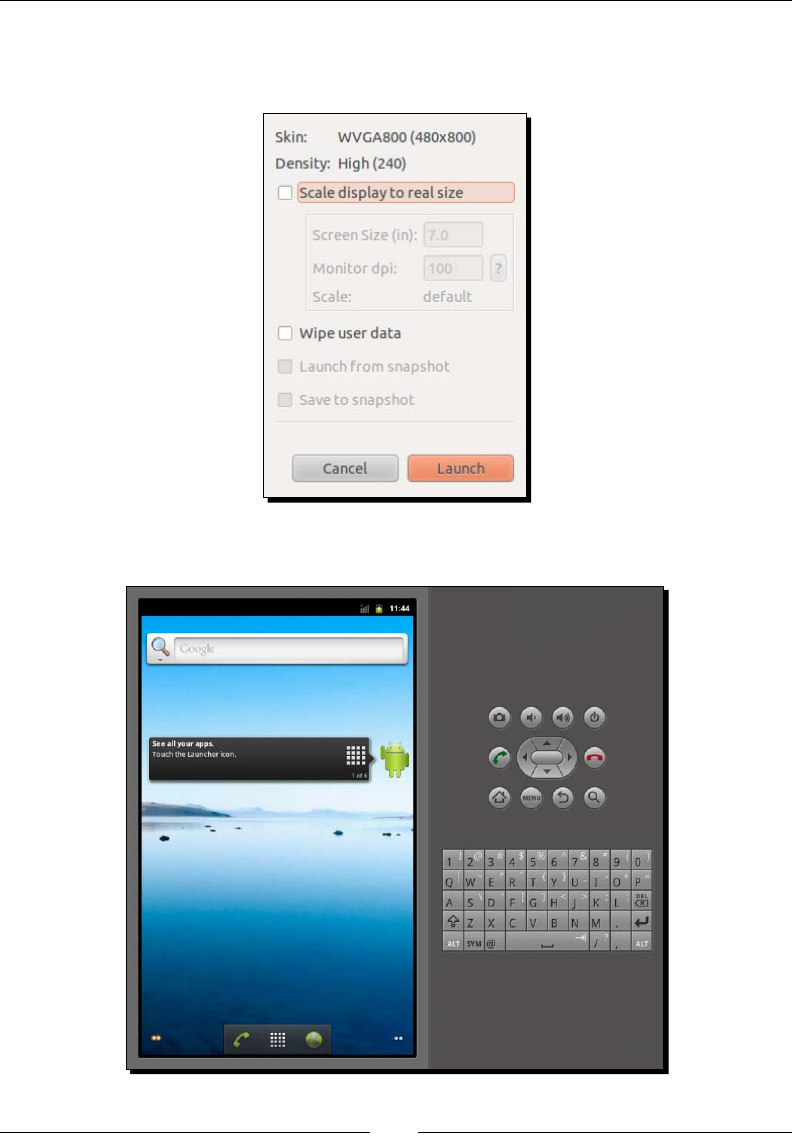

12. Click on the Launch buon:

13. The emulator starts up and aer a few minutes, your device is loaded:

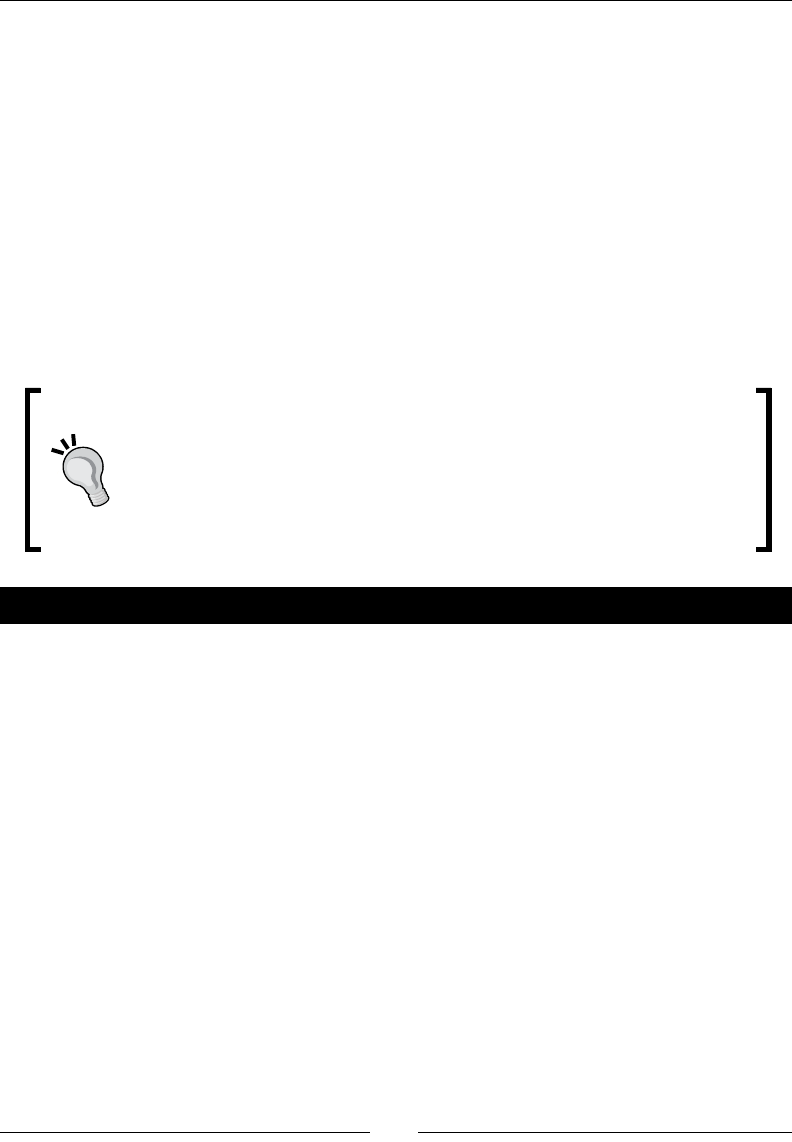

What just happened?

We have created an Android Virtual Device that emulates a Nexus One with an HDPI (High Density) 3.7-inch screen and a resolution of 480x800 pixels. So we are now able to test the applications we are going to develop in a representative environment. Even better, we are now able to test them in several conditions and resolutions (also called skins) without requiring a costly device.

Although this is out of the scope of this book, customizing additional options, such as the presence of a GPS, camera, and so on, is also possible when creating an AVD to test an application in limited hardware conditions. And as a final note, screen orientation can be switched with Ctrl + F11 and Ctrl + F12. Check out the Android website for more information on how to use and configure the emulator (http://developer.android.com/guide/developing/devices/emulator.html).

Emulation is not simulation

Although emulation is a great tool when developing, there are a few important points to take into account: emulation is slow, not always perfectly representative, and some features such as GPS support may be lacking. Moreover, and this is probably the biggest drawback, OpenGL ES is only partially supported. More specifically, only OpenGL ES 1 currently works on the emulator.

Have a go hero

Now that you know how to install and update Android platform components and create an emulator, try to create an emulator for Android Honeycomb tablets. Using the Android SDK and AVD Manager, you will need to do the following:

- Install Honeycomb SDK components

- Create a new AVD that targets the Honeycomb platform

- Start the emulator and use proper screen scaling to match real tablet scale

Depending on your computer's resolution, you may need to tweak the AVD display scale. This can be done by checking Scale display to real size when starting the emulator and entering your monitor density (use the ? button to calculate it). If all goes well, you should obtain the new Honeycomb interface at its real scale (no worries, it is also in Landscape mode on my computer):

The following section is dedicated to Windows and Mac OS X. If you are a Linux user, you can immediately jump to the Developing with an Android device on Linux section.

Developing with an Android device on Windows and Mac OS X

Emulators can be of really good help, but they are nothing compared to a real device. Fortunately, Android provides sufficient connectivity to develop on a real device and make the testing cycle more efficient. So take your Android device in hand, switch it on, and let's try to connect it to Windows or Mac OS X.

Time for action – setting up your Android device on Windows and Mac OS X

Installation of a device for development on Windows is manufacturer-specific. More information, including a full list of device manufacturers, can be found at http://developer.android.com/sdk/oem-usb.html. If you received a driver CD with your Android device, you can use it. Note that the Android SDK also contains some Windows drivers under $ANDROID_SDK\extras\google\usb_driver. Specific instructions are available for Google development phones, Nexus One, and Nexus S at http://developer.android.com/sdk/win-usb.html.

Mac users should also refer to their manufacturer's instructions. However, as the Mac's ease of use is not just a legend, simply connecting an Android device to a Mac should be enough to get it working! Your device should be recognized immediately without installing anything.

Once the driver (if applicable) is installed on the system, do the following:

1. Go to the home menu, then go to Settings | Application | Development on your mobile device (this may change depending on your manufacturer).

2. Enable USB debugging and Stay awake.

3. Plug your device into your computer using a data connection cable (beware, some cables are charging-only cables and will not work!). Depending on your device, it may appear as a USB disk.

4. Launch Eclipse.

5. Open the DDMS perspective. If it is working properly, your phone should be listed in the Devices view:

6. Say cheese and take a screen capture of your own phone by clicking the corresponding toolbar button:

Now you are sure your phone is correctly connected!

What just happened?

We have connected an Android device to a computer in development mode and enabled the Stay awake option to stop automatic screen shutdown when the phone is charging.

If your device is still not working, go to the Troubleshooting a development device section.

The device and the computer communicate through an intermediate background service: the Android Debug Bridge (ADB) (more about it in the next chapter). ADB starts automatically the first time it is called, when Eclipse ADT is launched or when it is invoked from the command line.

This is the end of the section dedicated to Windows and Mac OS X. If you are not a Linux user, you can jump to the Troubleshooting a development device section or the Summary section.

Developing with an Android device on Linux

Emulators can be of really good help, but they are nothing compared to a real device. Fortunately, Android provides sufficient connectivity to develop on a real device and make the testing cycle more efficient. So take your Android device in hand, switch it on, and let's try to connect it to Linux.

Time for action – setting up your Android device on Ubuntu

1. Go to Home | Menu | Sengs | Applicaon | Development on your mobile device

(may change depending on your manufacturer).

2. Enable USB debugging and Stay awake.

3. Plug your device into your computer using a data cable (beware: some cables are charging-only cables and will not work!). Depending on your device, it may appear as a USB disk.

4. Try to run ADB and list devices. If you are lucky, your device works out of the box and appears in the list of devices. In that case, you can ignore the following steps:

$ adb devices

Setting Up your Environment

[ 40 ]

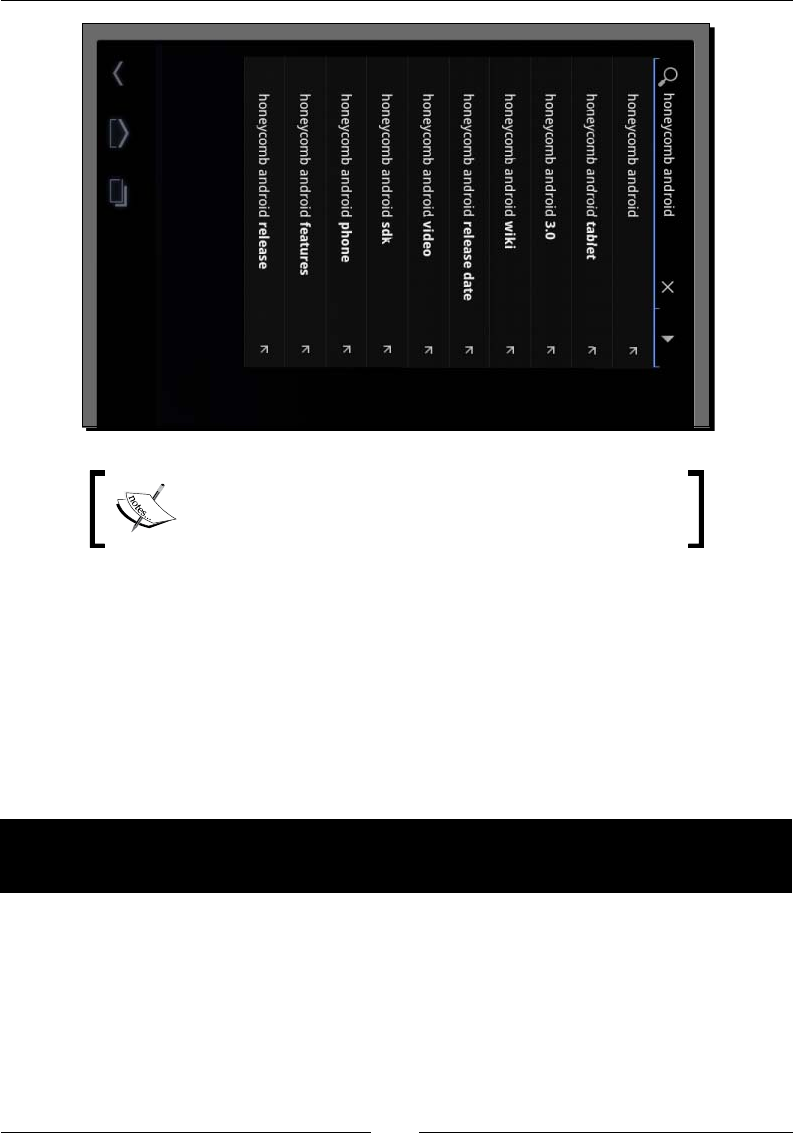

5. If ????????? appears instead of your device name (which is likely), then ADB does

not have proper access rights. We need to nd your Vendor ID and Product ID.

Because Vendor ID is a xed value for each manufacturer, you can nd it in the

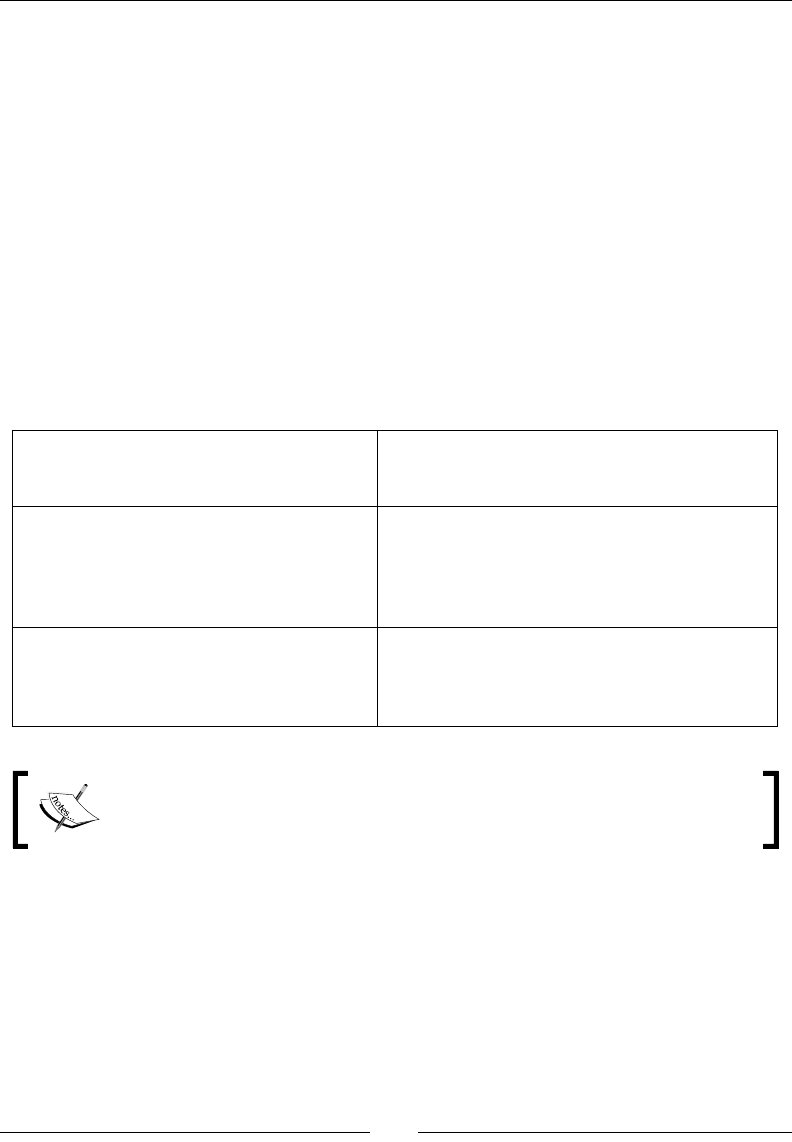

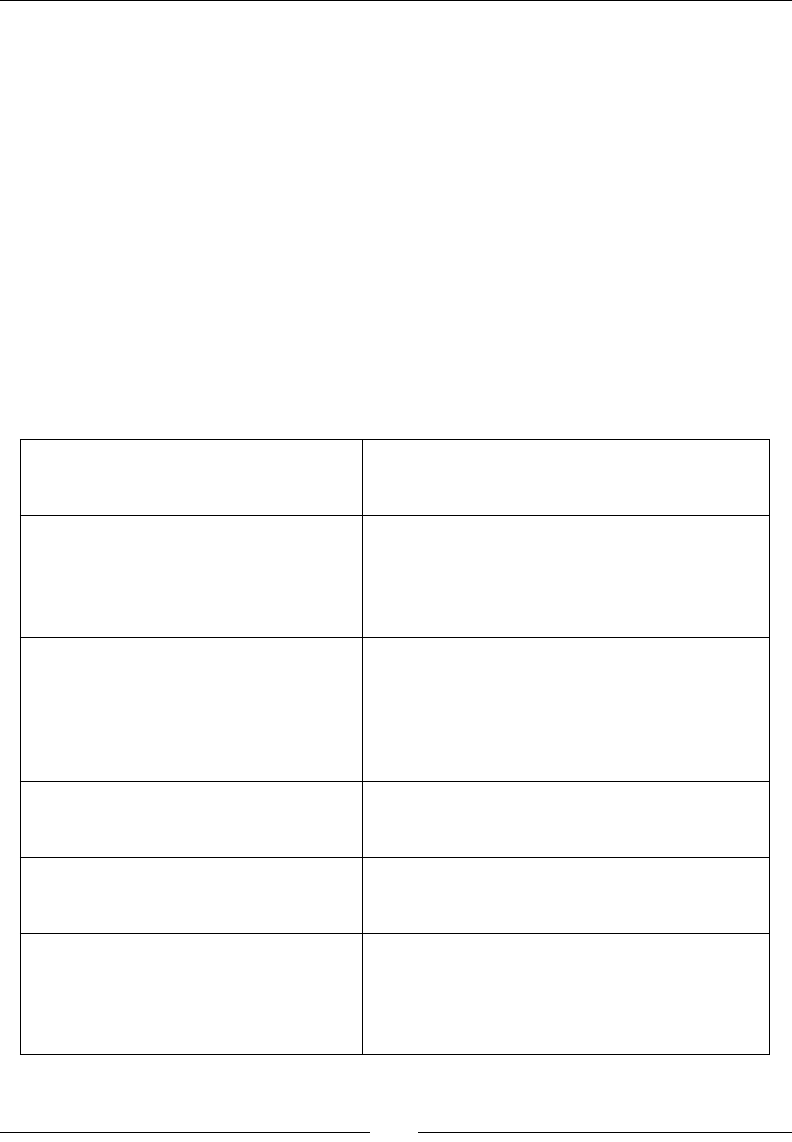

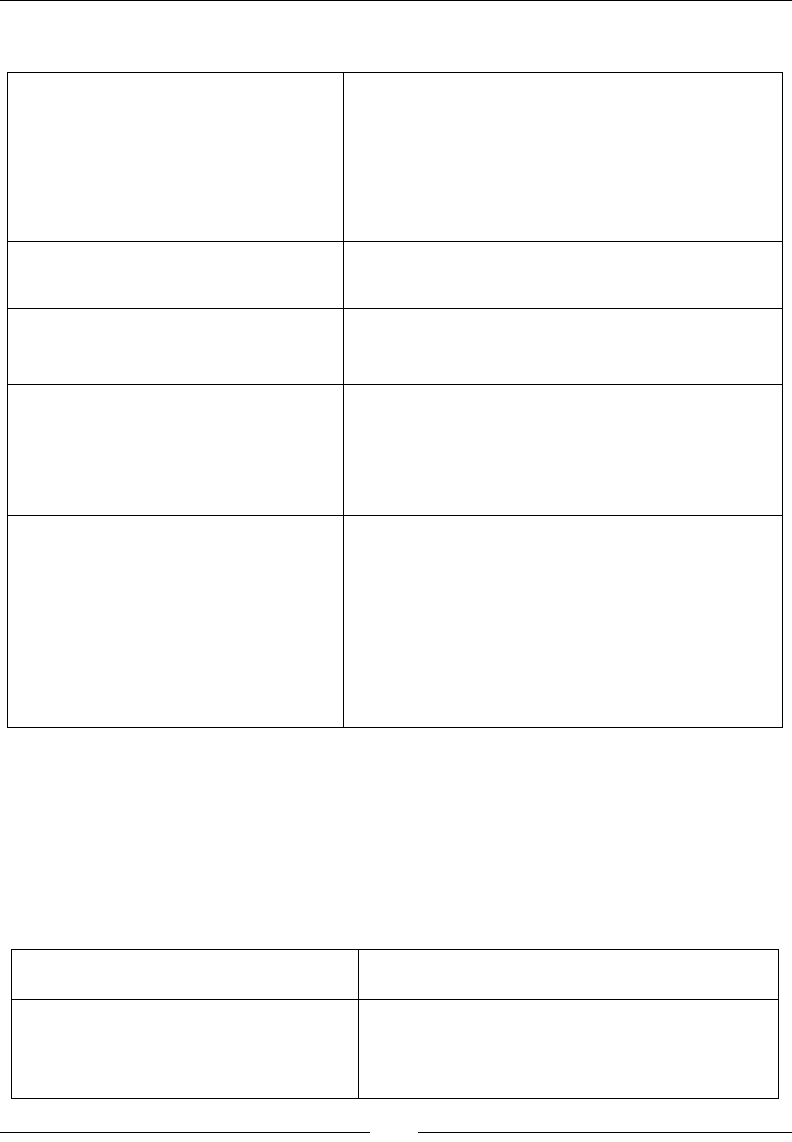

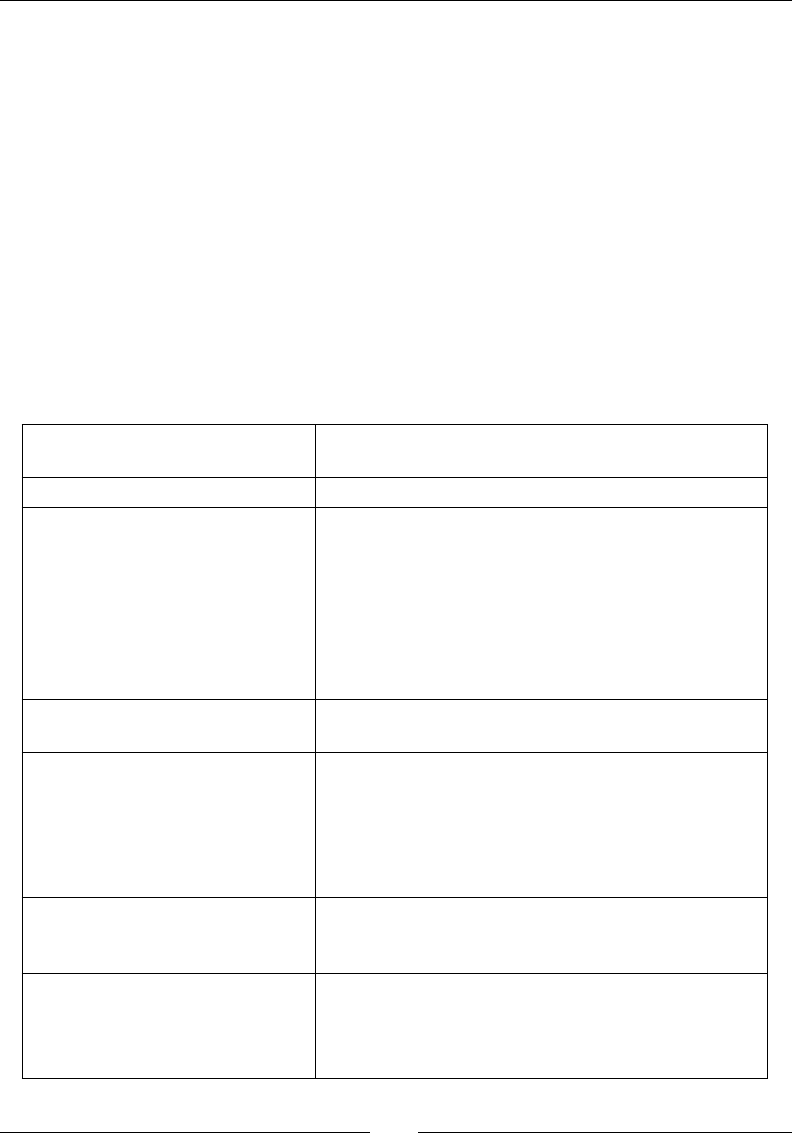

following list:

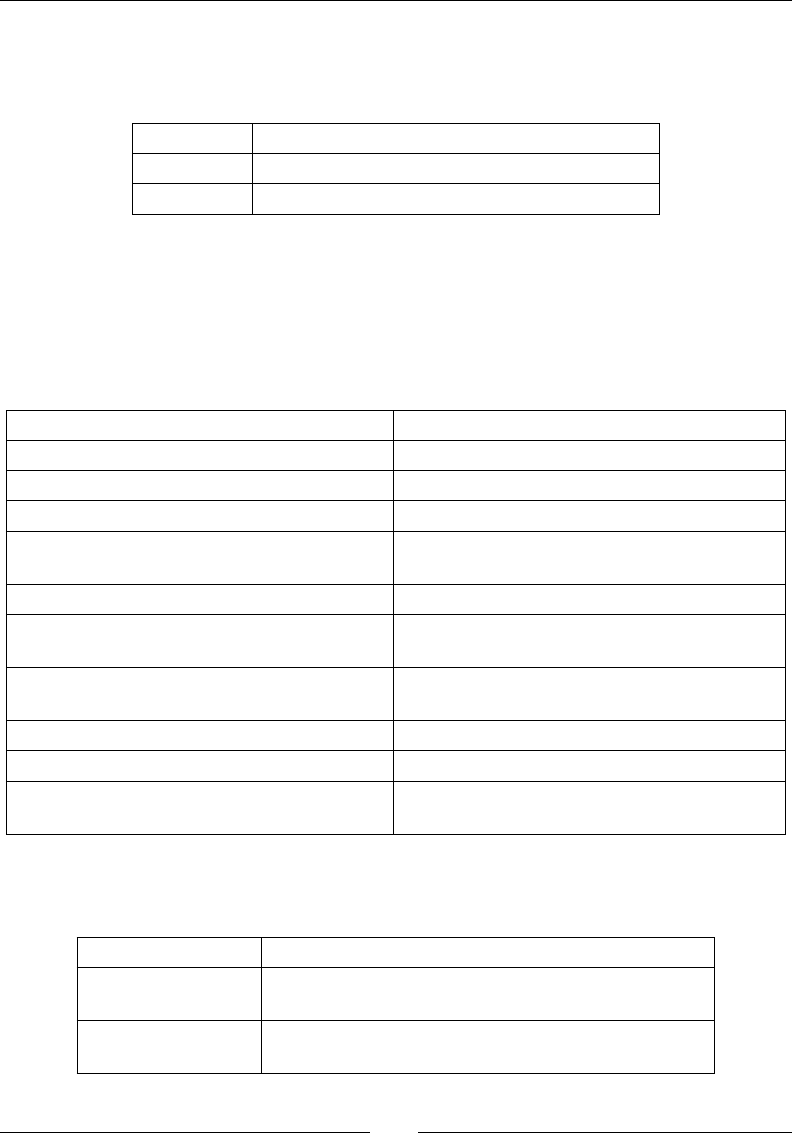

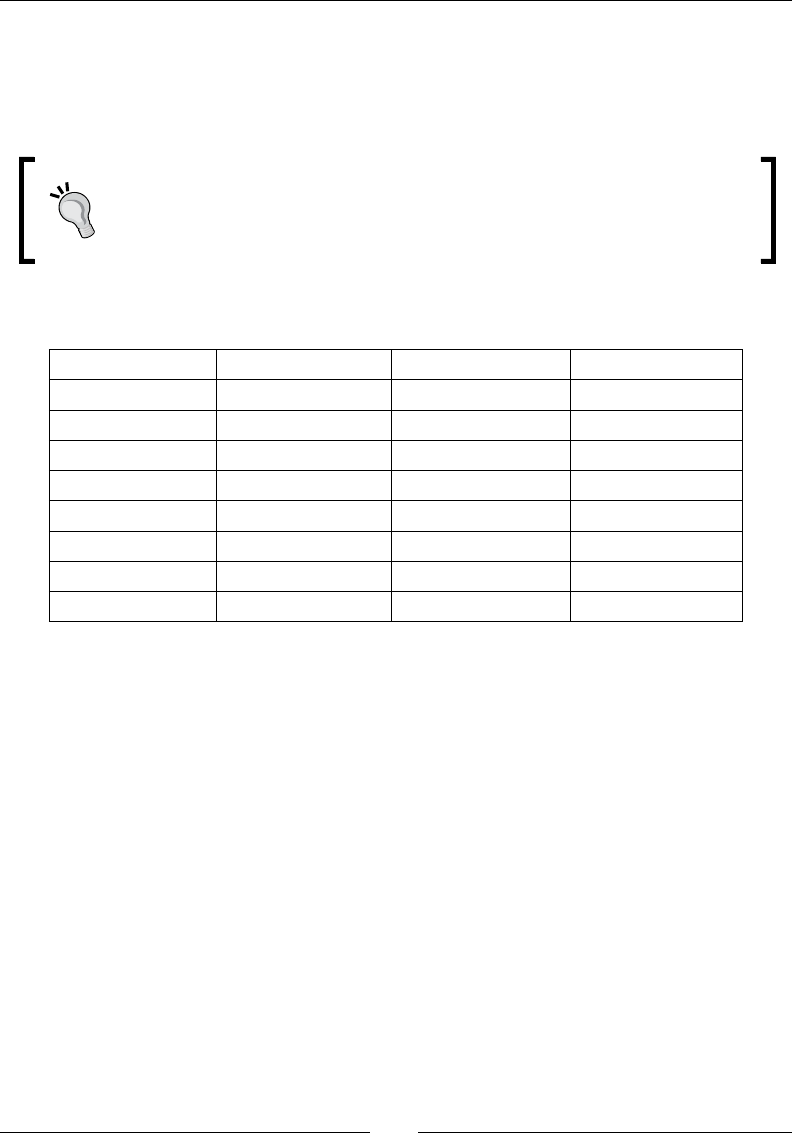

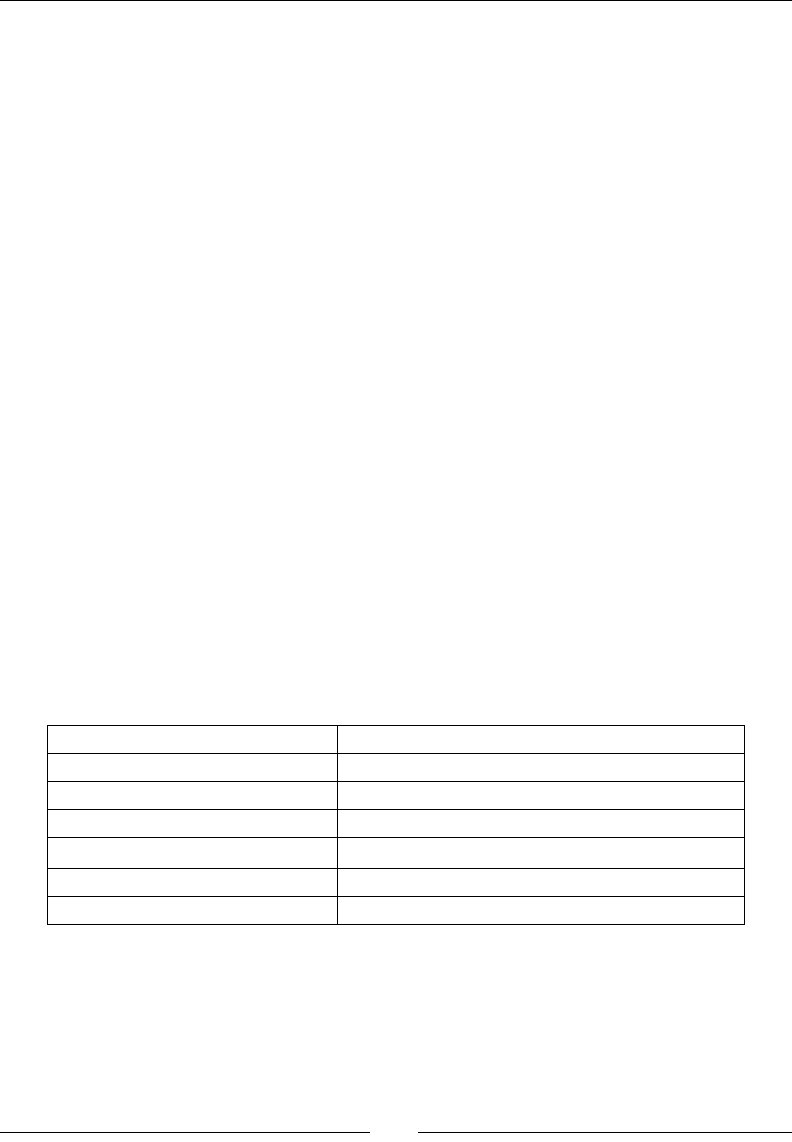

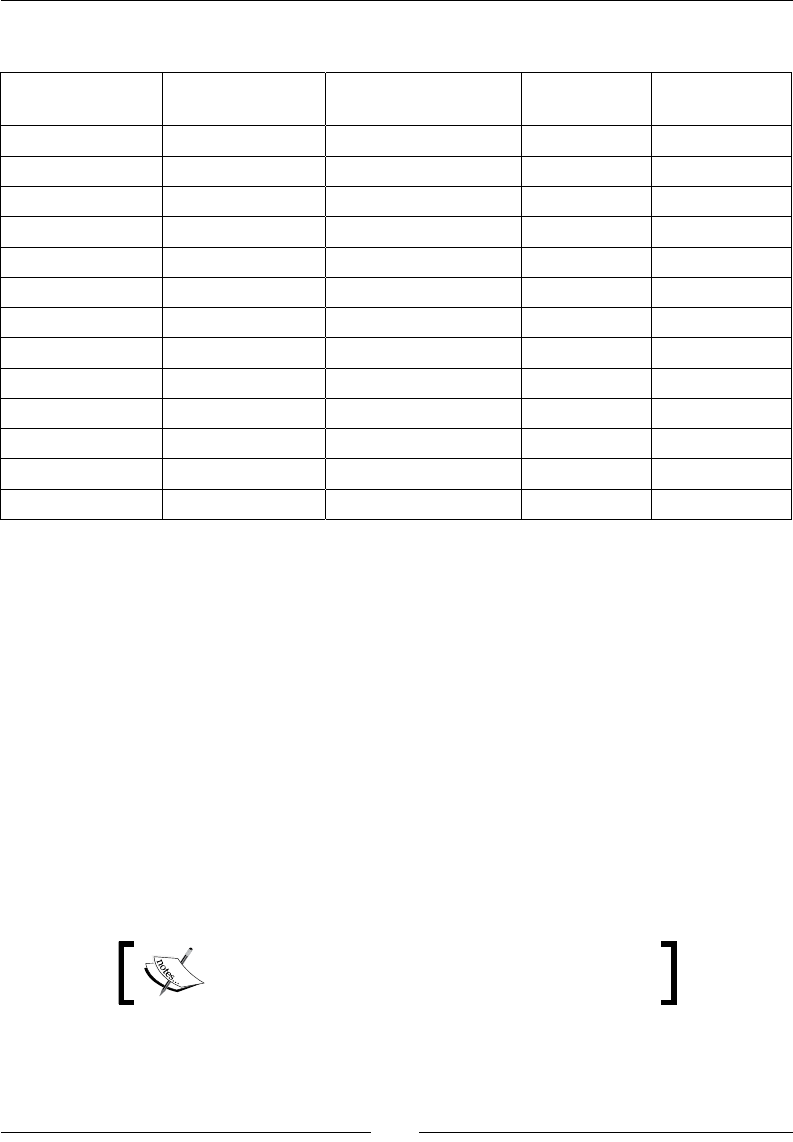

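| Manufacturer | USB Vendor ID |
|---|---|
| Acer | 0502 |
| Dell | 413c |
| Foxconn | 0489 |
| Garmin-Asus | 091e |
| HTC | 0bb4 |
| Huawei | 12d1 |
| Kyocera | 0482 |
| LG | 1004 |
| Motorola | 22b8 |
| Nvidia | 0955 |
| Pantech | 10a9 |
| Samsung | 04e8 |
| Sharp | 04dd |
| Sony Ericsson | 0fce |
| ZTE | 19d2 |

Vendor IDs are hexadecimal values; write them in lowercase (as lsusb prints them) in udev rules, because udev compares attribute values case-sensitively.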

The current list of Vendor IDs can be found on the Android website at http://developer.android.com/guide/developing/device.html#VendorIds.

6. The device Product ID can be found with the lsusb command, piping its output through grep with the Vendor ID to find it more easily. In the following example, the value 0bb4 is the HTC Vendor ID and 0c87 is the HTC Desire Product ID:

$ lsusb | grep 0bb4


7. As the root user, create a file /etc/udev/rules.d/52-android.rules containing your Vendor and Product IDs:

$ sudo sh -c 'echo SUBSYSTEM==\"usb\", ATTR{idVendor}==\"<Your Vendor ID>\", ATTR{idProduct}==\"<Your Product ID>\", MODE=\"0666\" > /etc/udev/rules.d/52-android.rules'

8. Change the file permissions to 644:

$ sudo chmod 644 /etc/udev/rules.d/52-android.rules

9. Restart the udev service (the Linux device manager):

$ sudo service udev restart

10. Relaunch the ADB server, this time as root (in recent SDK versions, adb lives in platform-tools rather than tools):

$ sudo $ANDROID_SDK/platform-tools/adb kill-server

$ sudo $ANDROID_SDK/platform-tools/adb start-server

11. Check whether your device works by listing the devices again. If ????????? appears, or worse, nothing appears, then something went wrong in the previous steps:

$ adb devices
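Steps 5 to 7 can also be sketched in C, for readers who prefer to script them. The helpers below take one line of lsusb output in the usual `Bus ... Device ...: ID vvvv:pppp description` form. They extract and lowercase the Vendor and Product IDs, look the vendor up in the table from step 5, and format a matching udev rule. The function names and the sample description text are hypothetical, not part of the SDK. The rule uses `ATTR{...}` match keys with `==`, the form documented by current udev versions; older udev releases also accepted `SYSFS{...}`.

```c
#include <ctype.h>
#include <stddef.h>
#include <stdio.h>
#include <string.h>

/* Vendor IDs from the table in step 5, in lowercase as lsusb prints them. */
static const struct { const char *id, *name; } vendors[] = {
    {"0502", "Acer"},        {"413c", "Dell"},          {"0489", "Foxconn"},
    {"091e", "Garmin-Asus"}, {"0bb4", "HTC"},           {"12d1", "Huawei"},
    {"0482", "Kyocera"},     {"1004", "LG"},            {"22b8", "Motorola"},
    {"0955", "Nvidia"},      {"10a9", "Pantech"},       {"04e8", "Samsung"},
    {"04dd", "Sharp"},       {"0fce", "Sony Ericsson"}, {"19d2", "ZTE"},
};

/* Returns the manufacturer for a lowercase Vendor ID, or NULL if unlisted. */
const char *vendor_name(const char *id)
{
    for (size_t i = 0; i < sizeof vendors / sizeof vendors[0]; i++)
        if (strcmp(vendors[i].id, id) == 0)
            return vendors[i].name;
    return NULL;
}

/* Copies 4 hex digits from s into out, lowercased; returns 0 if s has fewer. */
static int read_hex4(const char *s, char out[5])
{
    for (int i = 0; i < 4; i++) {
        if (!isxdigit((unsigned char)s[i]))
            return 0;
        out[i] = (char)tolower((unsigned char)s[i]);
    }
    out[4] = '\0';
    return 1;
}

/* Extracts "vvvv" and "pppp" from a line such as
   "Bus 002 Device 005: ID 0bb4:0c87 High Tech Computer Corp."
   Returns 1 on success, 0 if the line has no "ID vvvv:pppp" field. */
int parse_lsusb_line(const char *line, char vendor[5], char product[5])
{
    const char *p = strstr(line, " ID ");
    if (p == NULL)
        return 0;
    p += 4; /* skip " ID " */
    return read_hex4(p, vendor) && p[4] == ':' && read_hex4(p + 5, product);
}

/* Writes the udev rule for the given IDs into buf; returns 0 if it does not fit. */
int format_udev_rule(char *buf, size_t size, const char *vendor, const char *product)
{
    int n = snprintf(buf, size,
                     "SUBSYSTEM==\"usb\", ATTR{idVendor}==\"%s\", "
                     "ATTR{idProduct}==\"%s\", MODE=\"0666\"",
                     vendor, product);
    return n >= 0 && (size_t)n < size;
}
```

For the HTC Desire line above, the parser yields `0bb4` and `0c87`, and `vendor_name` reports HTC. Printing the formatted rule and saving it as root under /etc/udev/rules.d/ produces the same kind of file as step 7. Lowercasing the IDs matters because udev matches attribute values case-sensitively.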

What just happened?

We have connected an Android device to a computer in development mode and enabled the Stay awake option, which stops the screen from switching off automatically while the phone is charging. If your device is still not working, go to the Troubleshooting a development device section.

We have also started the Android Debug Bridge (ADB), a background service that acts as a mediator for computer/device communication (more about it in the next chapter). ADB is started automatically the first time it is called, either when Eclipse ADT is launched or when it is invoked from the command line.

And more important than anything, we have discovered that HTC means High Tech Computer! Jokes apart, the connection process can become tricky on Linux. If you belong to the unlucky group of people who need to launch ADB as root, you are highly advised to create a startup script similar to the following one to launch ADB. You can use it from the command line or add it to your main menu (Menu | Preferences | Main Menu on Ubuntu):

#!/bin/sh
# Restart the ADB server as root and show its messages in a Zenity window.
stop_command="$ANDROID_SDK/platform-tools/adb kill-server"
launch_command="$ANDROID_SDK/platform-tools/adb start-server"
/usr/bin/gksudo "/bin/bash -c '$stop_command; $launch_command'" | zenity --text-info --title=Logs


This script displays the daemon startup messages in a Zenity window (Zenity is a tool that displays GTK+ dialog boxes from shell scripts).

At step 7, if 52-android.rules does not work, then try 50-android.rules or 51-android.rules (or all of them). Although udev (the Linux device manager) should only use the prefix number to order rule files lexicographically, renaming the file sometimes seems to do the trick. The magic of Linux!
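The ordering rule mentioned in the note can be illustrated in C. udev reads all rule files in lexical order of their file names, regardless of which rules directory they live in. A plain `strcmp` sort reproduces that order. The file names and the `sort_rule_files` helper below are hypothetical examples chosen for illustration.

```c
#include <stddef.h>
#include <stdlib.h>
#include <string.h>

/* qsort comparator for an array of C strings. */
static int compare_names(const void *a, const void *b)
{
    const char *const *x = a;
    const char *const *y = b;
    return strcmp(*x, *y);
}

/* Sorts rule file names into lexical order, the order in which udev reads them. */
void sort_rule_files(const char **names, size_t count)
{
    qsort(names, count, sizeof names[0], compare_names);
}
```

Sorting `52-android.rules`, `70-persistent-net.rules`, `100-example.rules`, `51-android.rules` and `50-android.rules` puts `100-example.rules` first. The comparison is character by character, so '1' sorts before '5'. This is why rule files conventionally use two-digit prefixes.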

This is the end of the section dedicated to Linux setup. The following section applies to all platforms.

Troubleshooting a development device

Having trouble connecting an Android development device to a computer can mean any of the following:

- Your host system is not properly set up
- Your development device is not working properly
- The ADB service is malfunctioning

If the problem comes from your host system, check your device manufacturer's instructions carefully to make sure any needed driver is correctly installed. Check the hardware properties to see whether the device is recognized, and turn on the USB storage mode (if applicable) to see whether it is working properly. Indeed, after getting connected, your device may be visible in your hardware settings but not as a disk. A device can be configured as a disk drive (if an SD card or similar is included) or in charge-only mode. This is absolutely fine, as the development mode works perfectly in charge-only mode.

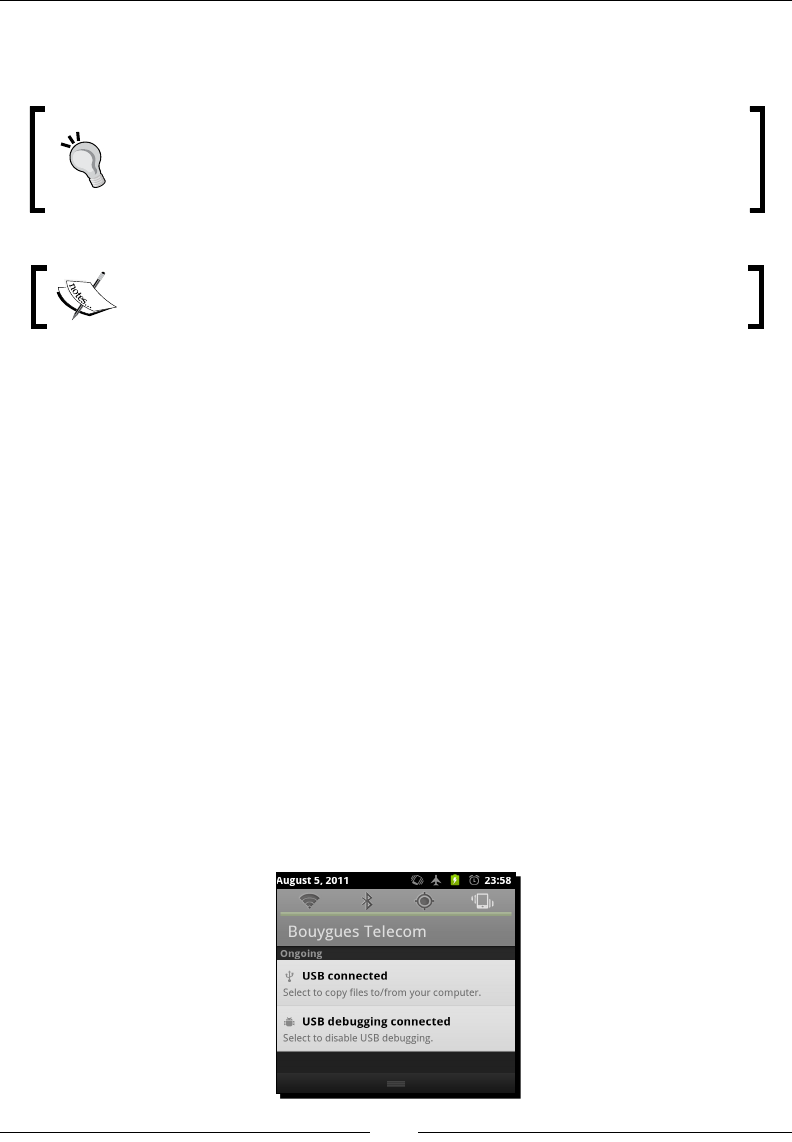

Disk-drive mode is generally activated from the Android task bar (USB connected item). Refer to your device documentation for the specifics of your device.


SD Card access

When the charge-only mode is activated, SD card files and directories are visible to the Android applications installed on your phone but not to your computer. Conversely, when Disk drive mode is activated, they are visible only from your computer. Check your connection mode when your application cannot access its resource files on an SD card.

If the problem comes from your Android device, a possible solution is to deactivate and reactivate the Debug mode on your device. This option can be switched from the Home | Menu | Settings | Application | Development screen on your mobile device (which may change depending on your manufacturer) or accessed more quickly from the Android task bar (USB debugging connected item). As a last resort, reboot your device.

The problem may also come from ADB. In that case, check whether ADB is working by issuing the following command from a terminal prompt:

$ adb devices

If your device is correctly listed, then ADB is working. This command launches the ADB service if it is not already running. You can also restart it with the following commands:

$ adb kill-server

$ adb start-server
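When `adb devices` is run from a script instead of being read by eye, its output can be checked automatically. The following C sketch assumes the output format of adb releases from this period. That format is a header line, then one `<serial><TAB><state>` line per device. A device that ADB cannot access shows up as question marks with the state `no permissions`. Output formats differ between adb releases (newer ones also report `unauthorized`, for instance), so treat this as an illustration. The function and enum names are hypothetical.

```c
#include <stddef.h>
#include <string.h>

enum adb_status { ADB_READY, ADB_NO_PERMISSION, ADB_OTHER };

/* Classifies one line (len characters, without '\n') of `adb devices` output. */
static enum adb_status classify_line(const char *line, size_t len)
{
    const char *tab = memchr(line, '\t', len);
    if (tab == NULL)
        return ADB_OTHER; /* header line or blank line */

    const char *state = tab + 1;
    size_t state_len = len - (size_t)(state - line);
    if (state_len > 0 && state[state_len - 1] == '\r')
        state_len--; /* tolerate Windows line endings */

    if (line[0] == '?' ||
        (state_len >= 14 && strncmp(state, "no permissions", 14) == 0))
        return ADB_NO_PERMISSION; /* ADB cannot open the USB device */
    if (state_len == 6 && strncmp(state, "device", 6) == 0)
        return ADB_READY;
    return ADB_OTHER; /* e.g. "offline" */
}

/* Scans the whole output, counting ready devices (*ready) and devices
   that ADB lacks the permissions to access (*denied). */
void scan_adb_devices(const char *output, int *ready, int *denied)
{
    *ready = 0;
    *denied = 0;
    for (const char *line = output; *line != '\0';) {
        const char *nl = strchr(line, '\n');
        size_t len = nl != NULL ? (size_t)(nl - line) : strlen(line);
        switch (classify_line(line, len)) {
        case ADB_READY:         (*ready)++;  break;
        case ADB_NO_PERMISSION: (*denied)++; break;
        default:                break;
        }
        if (nl == NULL)
            break;
        line = nl + 1;
    }
}
```

Each line is bounded before it is classified, so a tab on a later line is never mistaken for part of the header. A non-zero `denied` count on Linux points back to the udev setup described in the Linux section.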

In any case, to solve a specific connection problem or to get up-to-date information, visit the following web page: http://developer.android.com/guide/developing/device.html. From experience, never neglect hardware. Always check with a second cable or device if you have one at your disposal. I once purchased a bad-quality cable, which performed badly whenever it was twisted...

Summary

Setting up our Android development platform is a bit tedious but is hopefully done once and for all! We have installed the necessary utilities using the package system on Linux, Developer Tools on Mac OS X, and Cygwin on Windows. Then we have deployed the Java and Android development kits and checked that they are working properly. Finally, we have seen how to create a phone emulator and connect a real phone for test purposes.

We now have the necessary tools in our hands to shape our mobile ideas. In the next chapter, we are going to use them to create, compile, and deploy our first Android projects!

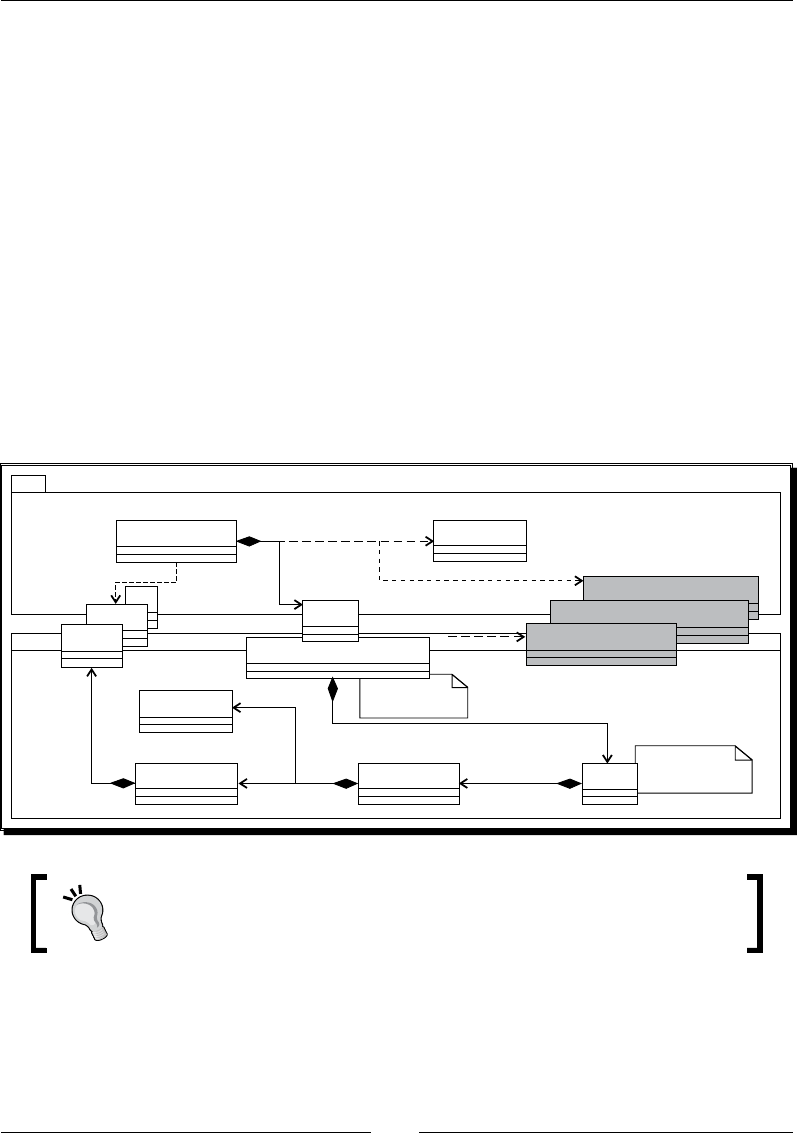

2

Creating, Compiling, and Deploying Native Projects

A man with the most powerful tools in hand is unarmed without the knowledge of their usage. Eclipse, GCC, Ant, Bash, Shell, Linux: any new Android programmer needs to deal with this technological ecosystem. Depending on your background, some of these names may sound familiar. Indeed, that is a real strength; Android is based on open source bricks which have matured for years. These bricks are cemented together by the Android Development Kits (SDK and NDK) and their set of new tools: Android Debug Bridge (ADB), Android Asset Packaging Tool (AAPT), Activity Manager (AM), ndk-build, and so on. Now that our development environment is set up, we can get our hands dirty and start using all these utilities to create, compile, and deploy projects which include native code.

In this second chapter, we are going to do the following:

Compile and deploy ocial sample applicaons from the Android NDK

with Ant build tool and nave code compiler ndk-build

Learn in more detail about ADB, the Android Debug Bridge, to control

a development device

Discover addional tools like AM to manage acvies and AAPT to

package applicaons

Create our rst own hybrid mul-language project using Eclipse

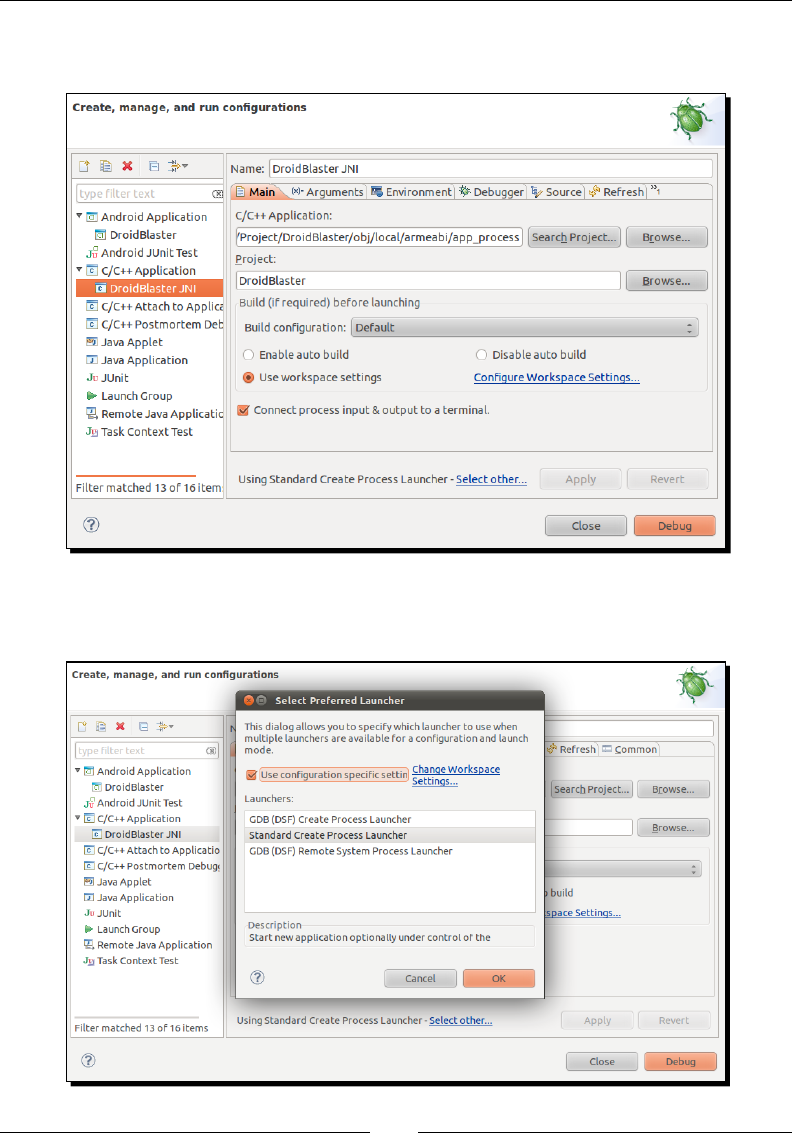

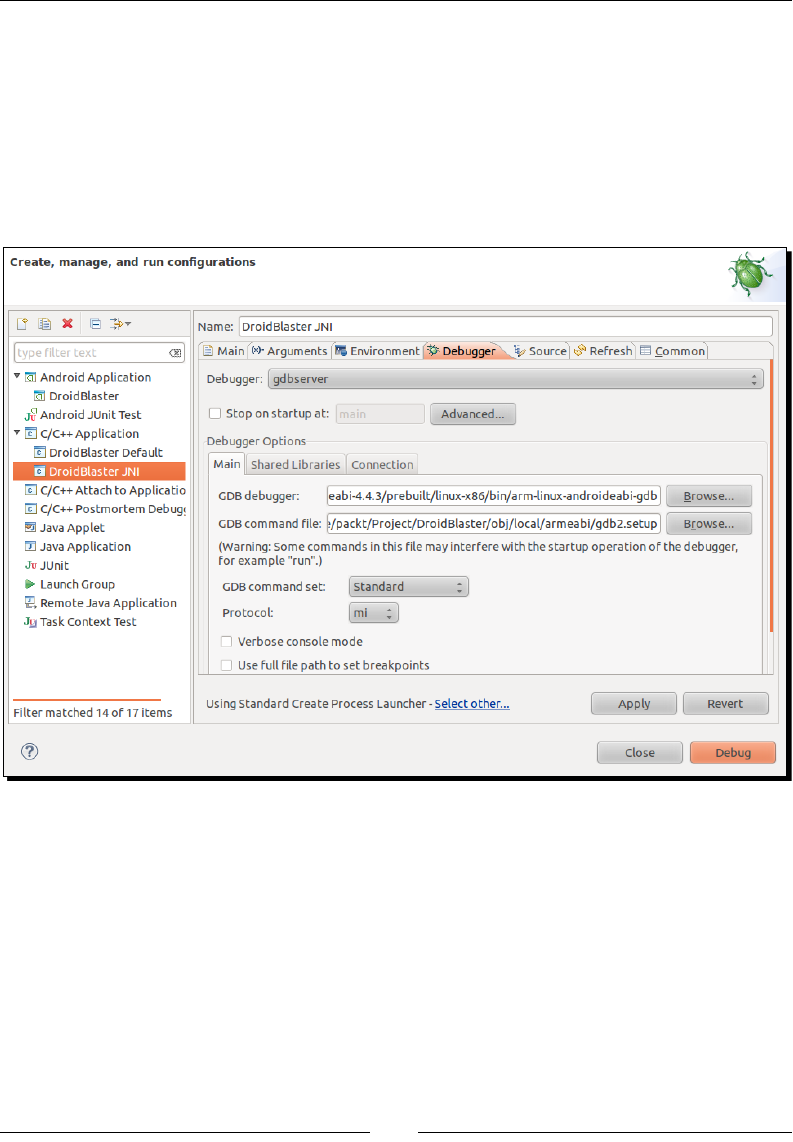

Interface Java to C/C++ through Java Nave Interfaces (in short JNI)

By the end of this chapter, you should know how to start up a new Android native project on your own.


Creating, Compiling, and Deploying Native Projects

[ 46 ]

Compiling and deploying NDK sample applications

I guess you cannot wait anymore to test your new development environment. So why

not compile and deploy elementary samples provided by the Android NDK rst to see it

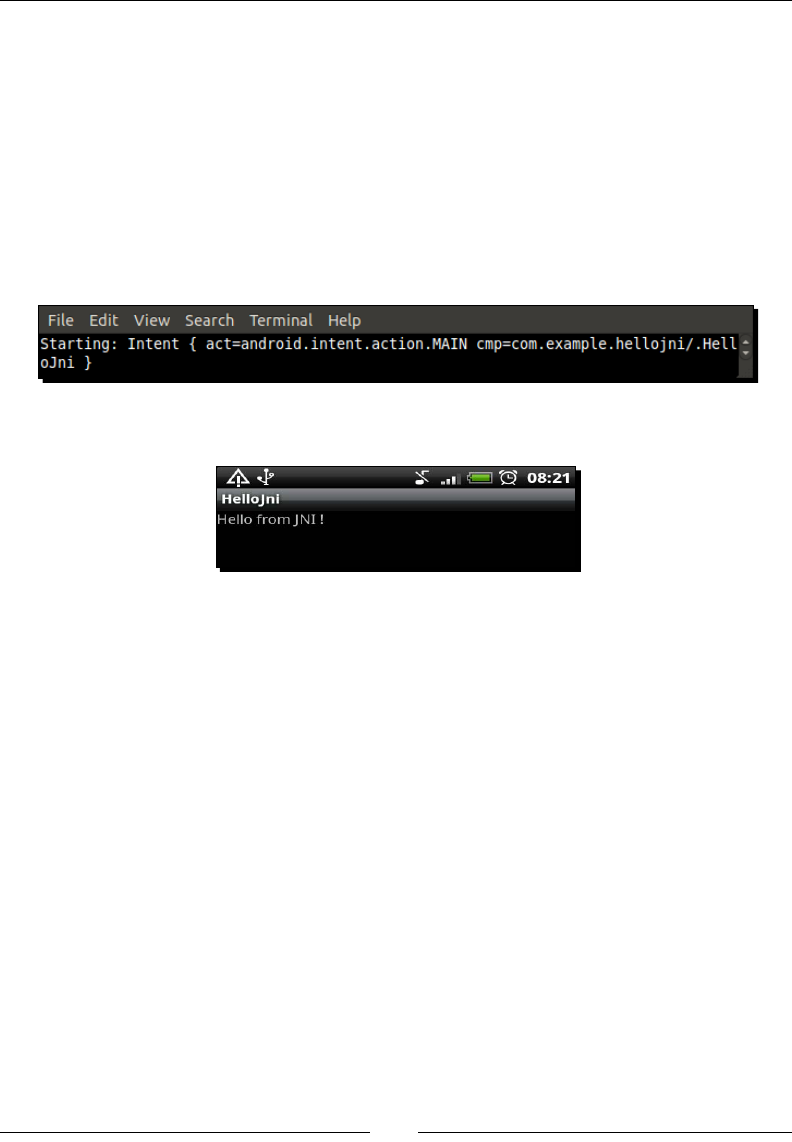

in acon? To get started, I propose to run HelloJni, a sample applicaon which retrieves a

character string dened inside a nave C library into a Java acvity (an acvity in Android

being more or less equivalent to an applicaon screen).
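For the Java activity to call into the native C library, the JVM has to find the C function by name. Under the JNI naming convention, a native method maps to a symbol with four parts. These are the prefix `Java_`, the fully qualified class name with dots turned into underscores, another underscore, and the method name. Underscores inside names are escaped as `_1`. The C sketch below computes that symbol. The package `com.example.hellojni` and the method `stringFromJNI` are assumed here to match the NDK sample, and `jni_symbol_name` is a hypothetical helper, not part of the NDK. The sketch ignores overloaded methods (which append a mangled signature after `__`) and non-ASCII characters.

```c
#include <ctype.h>
#include <stddef.h>
#include <string.h>

/* Appends n bytes of s to buf at *pos, keeping buf '\0'-terminated. */
static int put(char *buf, size_t size, size_t *pos, const char *s, size_t n)
{
    if (*pos + n >= size)
        return 0; /* no room left for the text plus the terminator */
    memcpy(buf + *pos, s, n);
    *pos += n;
    buf[*pos] = '\0';
    return 1;
}

/* Appends a Java name mangled the JNI way: '.' -> '_' and '_' -> "_1".
   ASCII letters and digits are copied; anything else is rejected here. */
static int put_mangled(char *buf, size_t size, size_t *pos, const char *s)
{
    for (; *s != '\0'; s++) {
        int ok;
        if (*s == '.')
            ok = put(buf, size, pos, "_", 1);
        else if (*s == '_')
            ok = put(buf, size, pos, "_1", 2);
        else if (isalnum((unsigned char)*s))
            ok = put(buf, size, pos, s, 1);
        else
            ok = 0; /* '$', non-ASCII, ...: not handled in this sketch */
        if (!ok)
            return 0;
    }
    return 1;
}

/* Builds "Java_<package>_<Class>_<method>" into buf.
   Returns 1 on success, 0 if a name is unsupported or buf is too small. */
int jni_symbol_name(char *buf, size_t size, const char *package,
                    const char *cls, const char *method)
{
    size_t pos = 0;
    if (!put(buf, size, &pos, "Java_", 5))
        return 0;
    if (package[0] != '\0' /* classes in the default package have no prefix */
        && !(put_mangled(buf, size, &pos, package) && put(buf, size, &pos, "_", 1)))
        return 0;
    return put_mangled(buf, size, &pos, cls)
        && put(buf, size, &pos, "_", 1)
        && put_mangled(buf, size, &pos, method);
}
```

With the assumed names, this yields `Java_com_example_hellojni_HelloJni_stringFromJNI`. The C function needs that name unless it is registered explicitly through the JNI `RegisterNatives` function.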

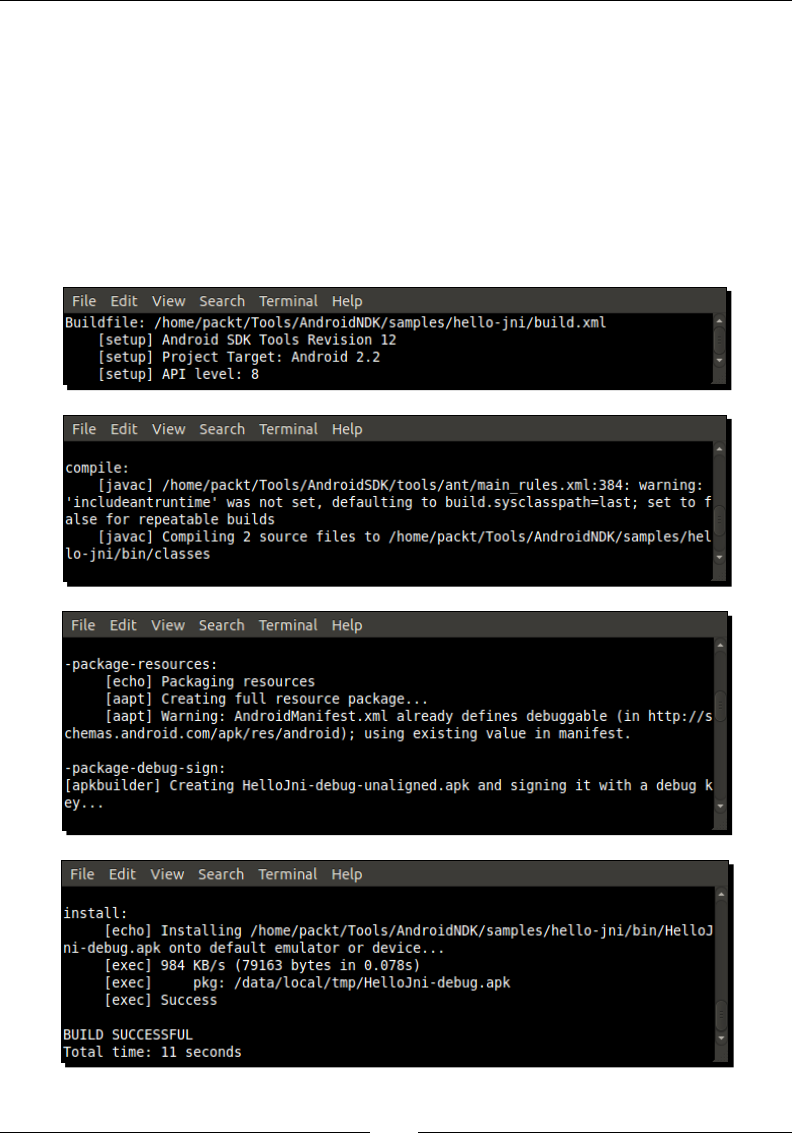

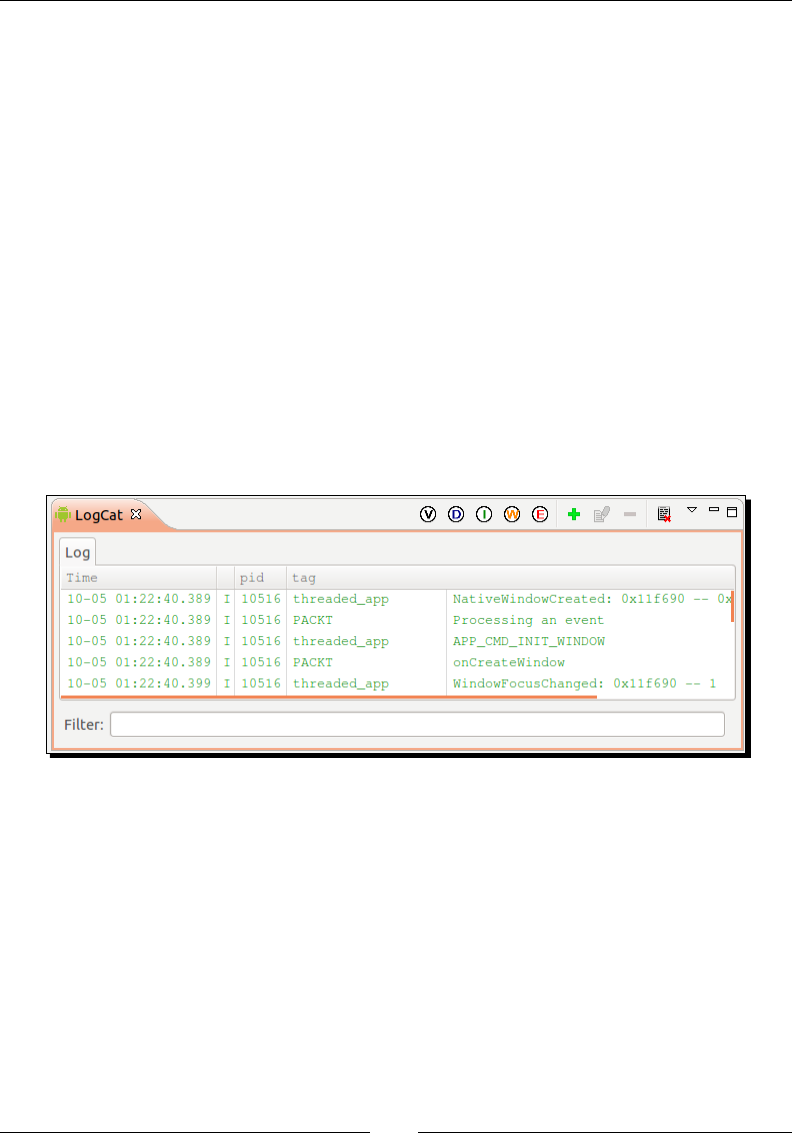

Time for action – compiling and deploying the hellojni sample

Let's compile and deploy the HelloJni project from the command line using Ant:

1. Open a command-line prompt (or a Cygwin prompt on Windows).

2. Go to the hello-jni sample directory inside the Android NDK. All the following steps have to be performed from this directory:

$ cd $ANDROID_NDK/samples/hello-jni

3. Create the Ant build file and all related configuration files automatically using the android command (android.bat on Windows). These files describe how to compile and package an Android application:

$ android update project -p .

4. Build libhello-jni nave library with ndk-build, which is a wrapper Bash

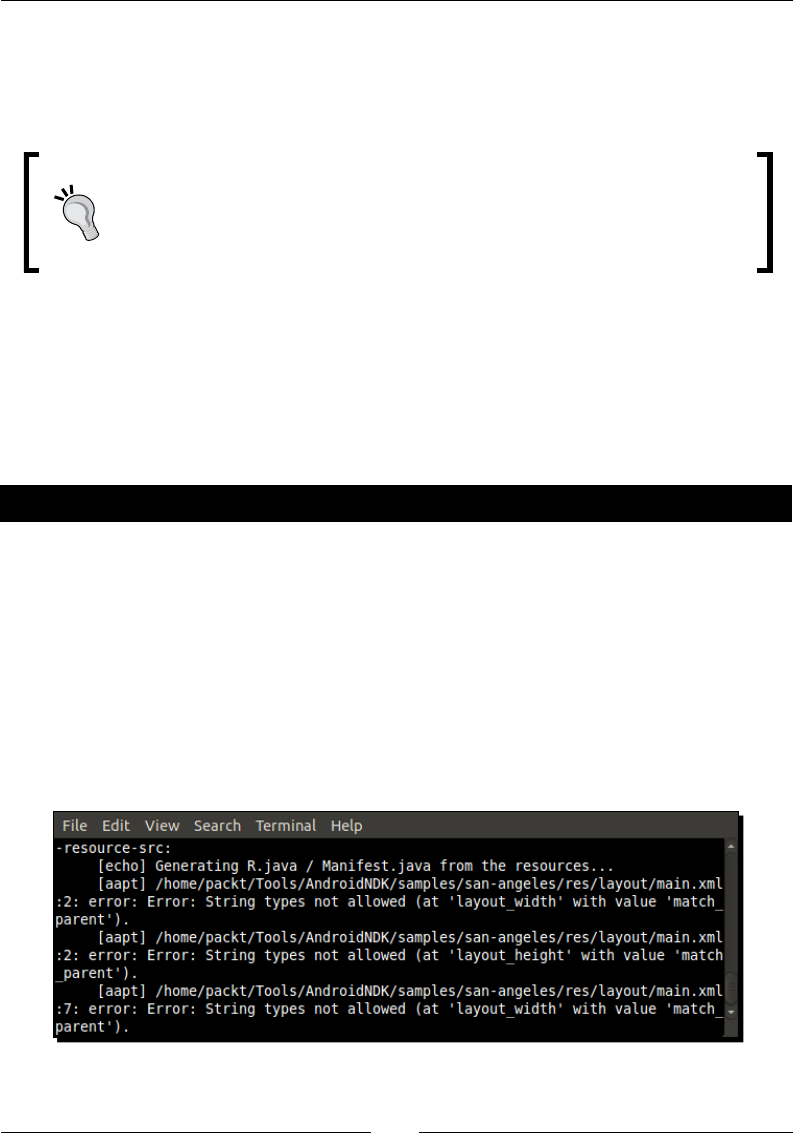

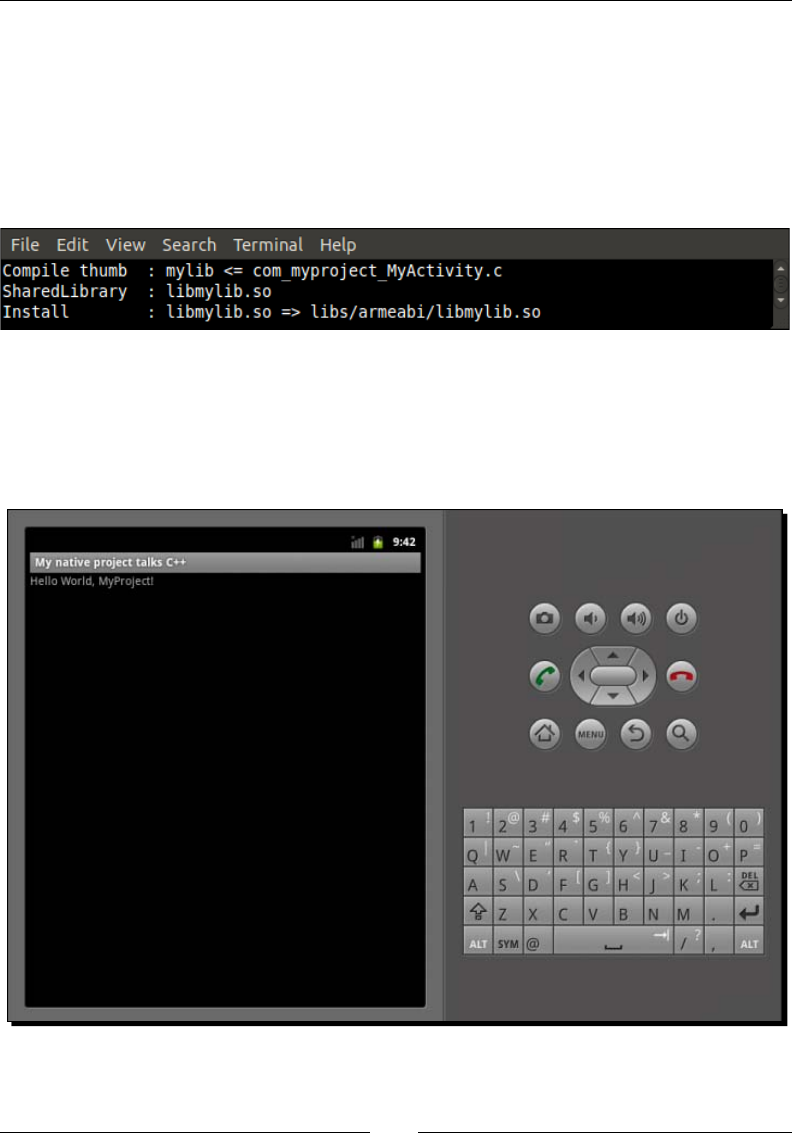

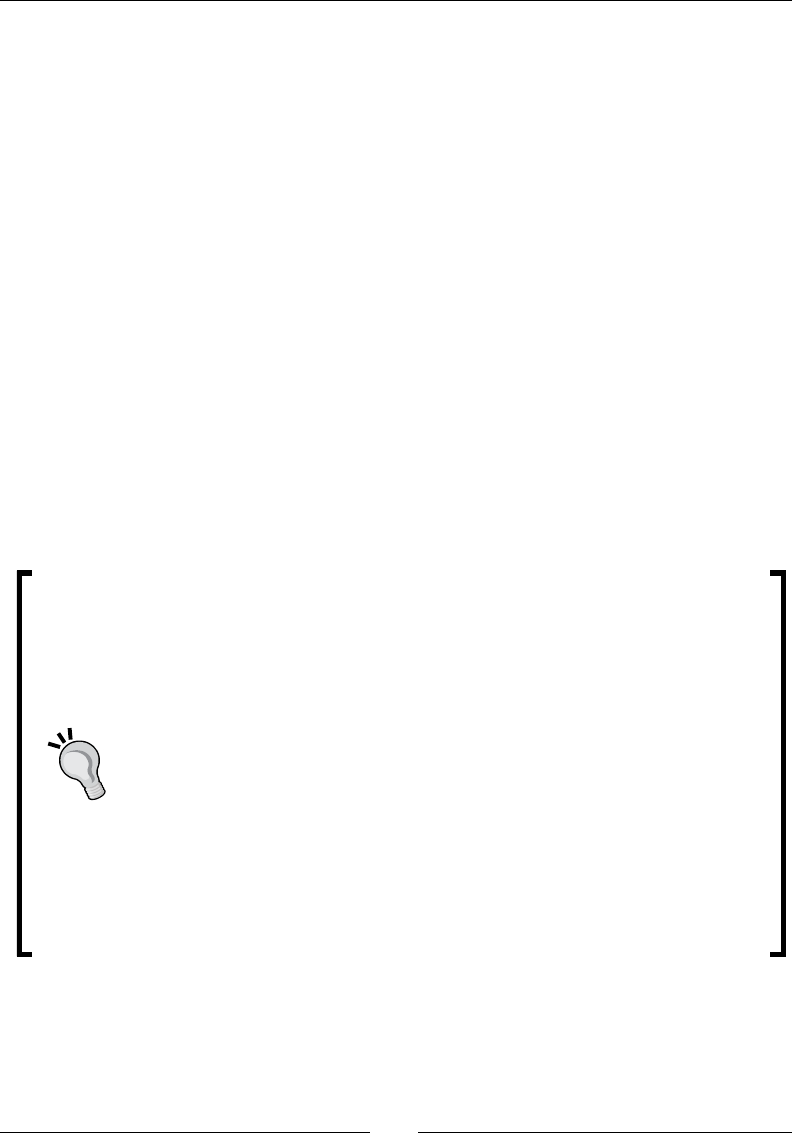

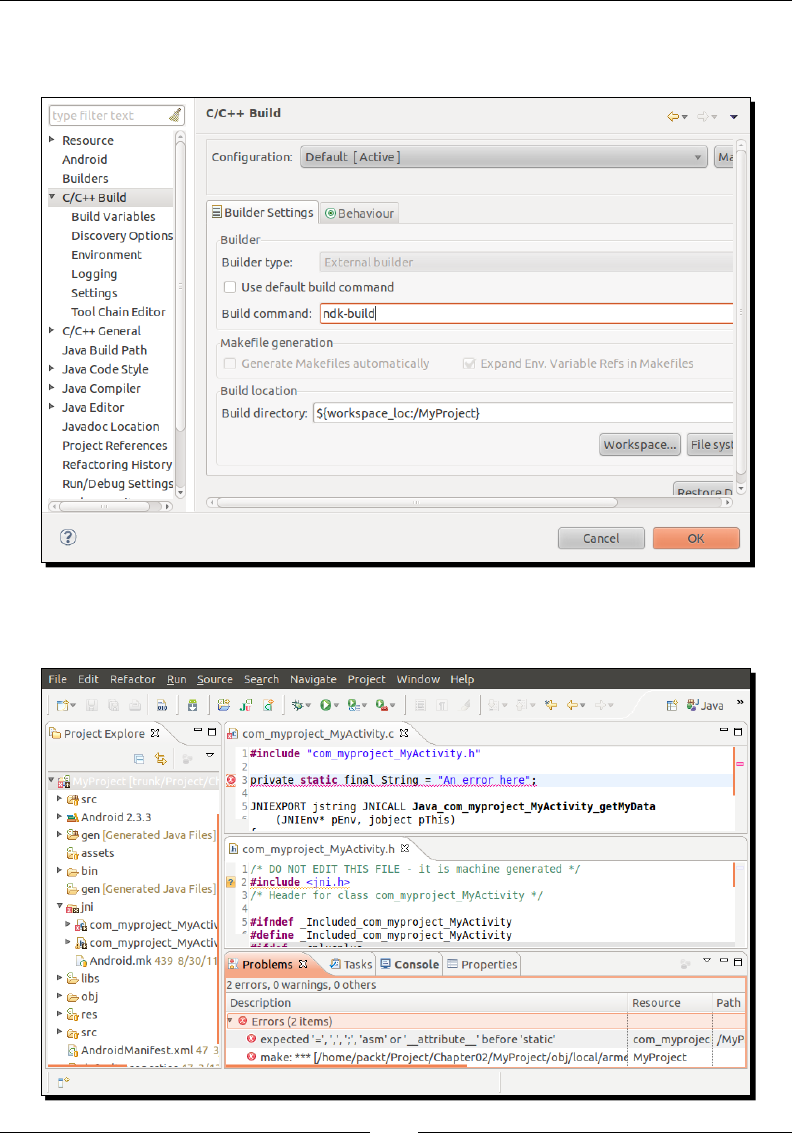

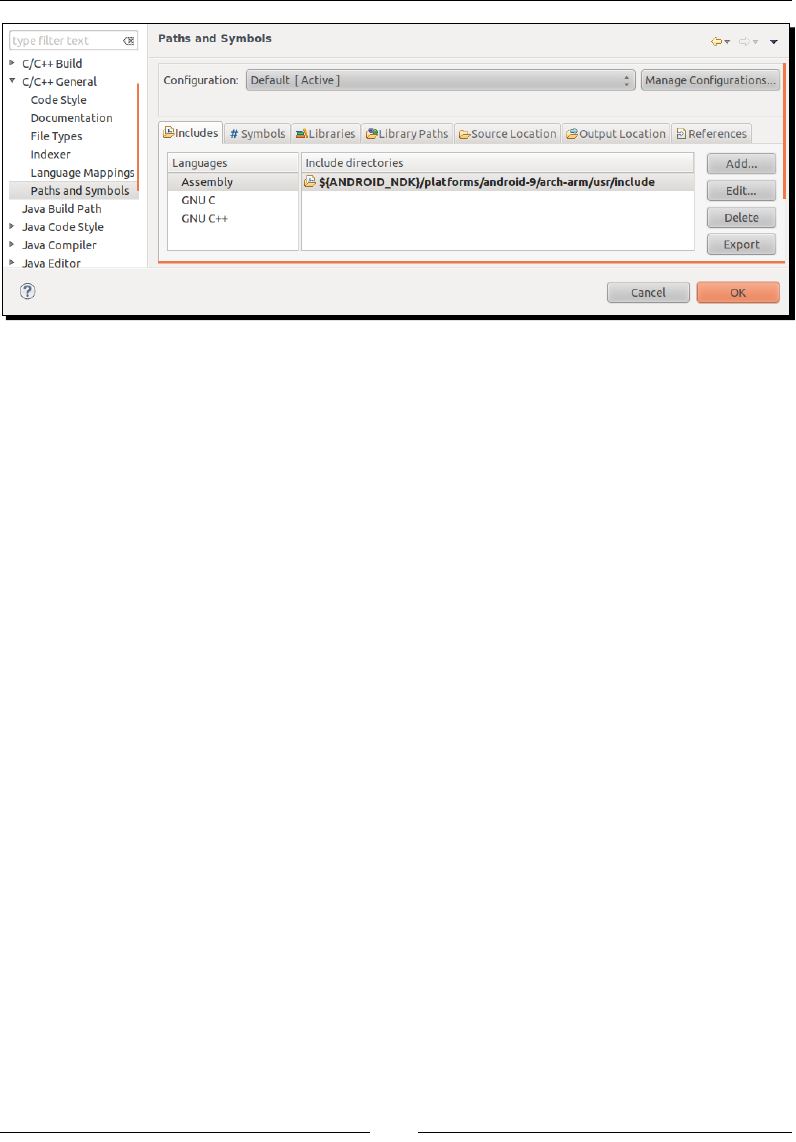

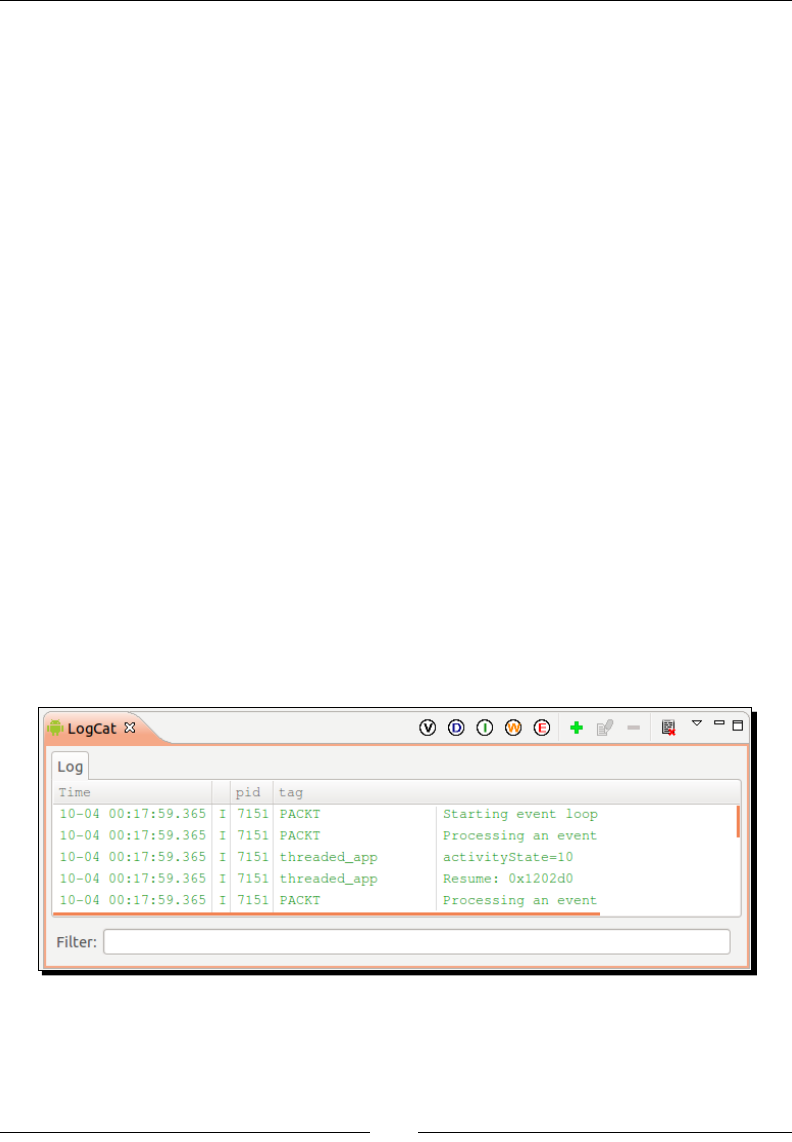

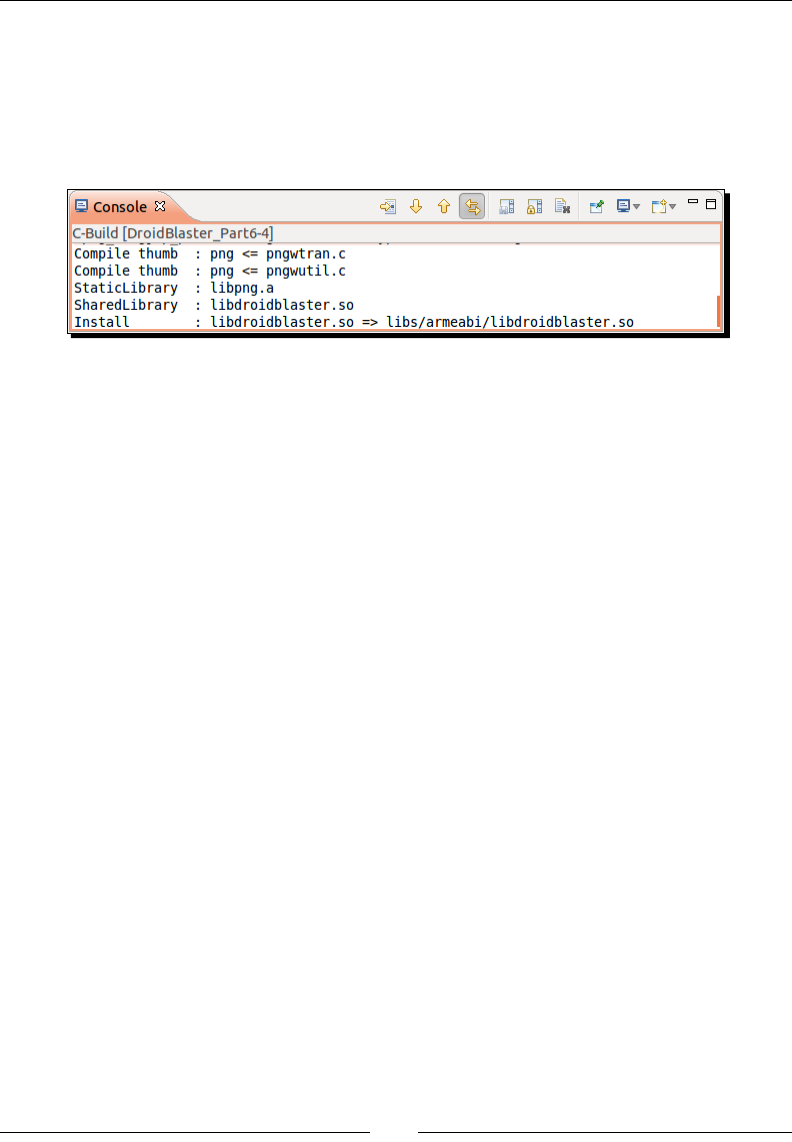

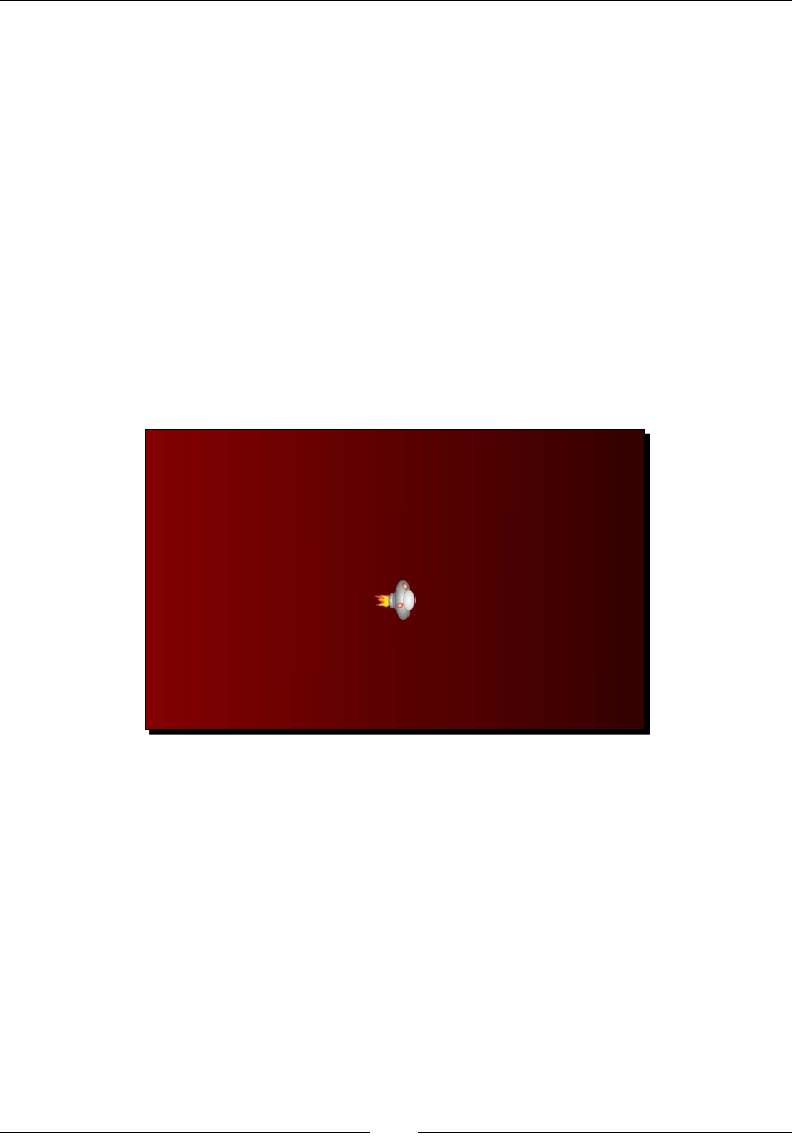

script around Make. Command ndk-build sets up the compilaon toolchain for