Autotools: A Practitioner's Guide to GNU Autoconf, Automake, and Libtool

Page Count: 364

- Brief Contents

- Contents in Detail

- Foreword

- Preface

- Introduction

- 1: A Brief Introduction to the GNU Autotools

- 2: Understanding the GNU Coding Standards

- Creating a New Project Directory Structure

- Project Structure

- Makefile Basics

- Creating a Source Distribution Archive

- Automatically Testing a Distribution

- Unit Testing, Anyone?

- Installing Products

- The Filesystem Hierarchy Standard

- Supporting Standard Targets and Variables

- Getting Your Project into a Linux Distro

- Build vs. Installation Prefix Overrides

- User Variables

- Configuring Your Package

- Summary

- 3: Configuring Your Project with Autoconf

- Autoconf Configuration Scripts

- The Shortest configure.ac File

- Comparing M4 to the C Preprocessor

- The Nature of M4 Macros

- Executing autoconf

- Executing configure

- Executing config.status

- Adding Some Real Functionality

- Generating Files from Templates

- Adding VPATH Build Functionality

- Let's Take a Breather

- An Even Quicker Start with autoscan

- Initialization and Package Information

- The Instantiating Macros

- Back to Remote Builds for a Moment

- Summary

- 4: More Fun with Autoconf: Configuring User Options

- 5: Automatic Makefiles with Automake

- 6: Building Libraries with Libtool

- 7: Library Interface Versioning and Runtime Dynamic Linking

- 8: FLAIM: An Autotools Example

- 9: FLAIM Part II: Pushing the Envelope

- 10: Using the M4 Macro Processor with Autoconf

- 11: A Catalog of Tips and Reusable Solutions for Creating Great Projects

- Item 1: Keeping Private Details out of Public Interfaces

- Item 2: Implementing Recursive Extension Targets

- Item 3: Using a Repository Revision Number in a Package Version

- Item 4: Ensuring Your Distribution Packages Are Clean

- Item 5: Hacking Autoconf Macros

- Item 6: Cross-Compiling

- Item 7: Emulating Autoconf Text Replacement Techniques

- Item 8: Using the ac-archive Project

- Item 9: Using pkg-config with Autotools

- Item 10: Using Incremental Installation Techniques

- Item 11: Using Generated Source Code

- Item 12: Disabling Undesirable Targets

- Item 13: Watch Those Tab Characters!

- Item 14: Packaging Choices

- Wrapping Up

- Index

www.nostarch.com

THE FINEST IN GEEK ENTERTAINMENT™

SHELVE IN: COMPUTERS/PROGRAMMING

$44.95 ($56.95 CDN)

CREATING PORTABLE SOFTWARE JUST GOT EASIER

“I LIE FLAT.”

This book uses RepKover—a durable binding that won’t snap shut.

The GNU Autotools make it easy for developers to create software that is portable across many Unix-like operating systems. Although the Autotools are used by thousands of open source software packages, they have a notoriously steep learning curve. And good luck to the beginner who wants to find anything beyond a basic reference work online.

Autotools is the first book to offer programmers a tutorial-based guide to the GNU build system. Author John Calcote begins with an overview of high-level concepts and a quick hands-on tour of the philosophy and design of the Autotools. He then tackles more advanced details, like using the M4 macro processor with Autoconf, extending the framework provided by Automake, and building Java and C# sources. He concludes the book with detailed solutions to the most frequent problems encountered by first-time Autotools users.

You’ll learn how to:

• Master the Autotools build system to maximize your software’s portability

• Generate Autoconf configuration scripts to simplify the compilation process

• Produce portable makefiles with Automake

• Build cross-platform software libraries with Libtool

• Write your own Autoconf macros

Autotools focuses on two projects: Jupiter, a simple “Hello, world!” program, and FLAIM, an existing, complex open source effort containing four separate but interdependent subprojects. Follow along as the author takes Jupiter’s build system from a basic makefile to a full-fledged Autotools project, and then as he converts the FLAIM projects from complex hand-coded makefiles to the powerful and flexible GNU build system.

ABOUT THE AUTHOR

John Calcote is a senior software engineer and architect at Novell, Inc. He’s been writing and developing portable networking and system-level software for nearly 20 years and is active in developing, debugging, and analyzing diverse open source software packages. He is currently a project administrator of the OpenSLP, OpenXDAS, and DNX projects, as well as the Novell-sponsored FLAIM database project.

AUTOTOOLS

A PRACTITIONER’S GUIDE TO GNU AUTOCONF, AUTOMAKE, AND LIBTOOL

JOHN CALCOTE

www.it-ebooks.info

AUTOTOOLS. Copyright © 2010 by John Calcote.

All rights reserved. No part of this work may be reproduced or transmitted in any form or by any means, electronic or mechanical, including photocopying, recording, or by any information storage or retrieval system, without the prior written permission of the copyright owner and the publisher.

14 13 12 11 10 1 2 3 4 5 6 7 8 9

ISBN-10: 1-59327-206-5

ISBN-13: 978-1-59327-206-7

Publisher: William Pollock

Production Editor: Ansel Staton

Cover and Interior Design: Octopod Studios

Developmental Editor: William Pollock

Technical Reviewer: Ralf Wildenhues

Copyeditor: Megan Dunchak

Compositor: Susan Glinert Stevens

Proofreader: Linda Seifert

Indexer: Nancy Guenther

For information on book distributors or translations, please contact No Starch Press, Inc. directly:

No Starch Press, Inc.

38 Ringold Street, San Francisco, CA 94103

phone: 415.863.9900; fax: 415.863.9950; info@nostarch.com; www.nostarch.com

Library of Congress Cataloging-in-Publication Data

Calcote, John, 1964-

Autotools : a practitioner's guide to GNU Autoconf, Automake, and Libtool / by John Calcote.

p. cm.

ISBN-13: 978-1-59327-206-7 (pbk.)

ISBN-10: 1-59327-206-5 (pbk.)

1. Autotools (Electronic resource) 2. Cross-platform software development. 3. Open source software. 4. UNIX (Computer file) I. Title.

QA76.76.D47C335 2010

005.3--dc22

2009040784

No Starch Press and the No Starch Press logo are registered trademarks of No Starch Press, Inc. Other product and company names mentioned herein may be the trademarks of their respective owners. Rather than use a trademark symbol with every occurrence of a trademarked name, we are using the names only in an editorial fashion and to the benefit of the trademark owner, with no intention of infringement of the trademark.

The information in this book is distributed on an “As Is” basis, without warranty. While every precaution has been taken in the preparation of this work, neither the author nor No Starch Press, Inc. shall have any liability to any person or entity with respect to any loss or damage caused or alleged to be caused directly or indirectly by the information contained in it.

Autotools_02.book Page iv Tuesday, June 15, 2010 2:38 PM

BRIEF CONTENTS

- Foreword by Ralf Wildenhues
- Preface
- Introduction
- Chapter 1: A Brief Introduction to the GNU Autotools
- Chapter 2: Understanding the GNU Coding Standards
- Chapter 3: Configuring Your Project with Autoconf
- Chapter 4: More Fun with Autoconf: Configuring User Options
- Chapter 5: Automatic Makefiles with Automake
- Chapter 6: Building Libraries with Libtool
- Chapter 7: Library Interface Versioning and Runtime Dynamic Linking
- Chapter 8: FLAIM: An Autotools Example
- Chapter 9: FLAIM Part II: Pushing the Envelope
- Chapter 10: Using the M4 Macro Processor with Autoconf
- Chapter 11: A Catalog of Tips and Reusable Solutions for Creating Great Projects
- Index

CONTENTS IN DETAIL

FOREWORD by Ralf Wildenhues

PREFACE

- Why Use the Autotools?
- Acknowledgments
- I Wish You the Very Best

INTRODUCTION

- Who Should Read This Book
- How This Book Is Organized
- Conventions Used in This Book
- Autotools Versions Used in This Book

1: A BRIEF INTRODUCTION TO THE GNU AUTOTOOLS

- Who Should Use the Autotools?
- When Should You Not Use the Autotools?
- Apple Platforms and Mac OS X
- The Choice of Language
- Generating Your Package Build System
- Autoconf
  - autoconf
  - autoreconf
  - autoheader
  - autoscan
  - autoupdate
  - ifnames
  - autom4te
  - Working Together
- Automake
  - automake
  - aclocal
- Libtool
  - libtool
  - libtoolize
  - ltdl, the Libtool C API
- Building Your Package
  - Running configure
  - Running make
- Installing the Most Up-to-Date Autotools
- Summary

2: UNDERSTANDING THE GNU CODING STANDARDS

- Creating a New Project Directory Structure
- Project Structure
- Makefile Basics
  - Commands and Rules
  - Variables
  - A Separate Shell for Each Command
  - Variable Binding
  - Rules in Detail
  - Resources for Makefile Authors
- Creating a Source Distribution Archive
  - Forcing a Rule to Run
  - Leading Control Characters
- Automatically Testing a Distribution
- Unit Testing, Anyone?
- Installing Products
  - Installation Choices
  - Uninstalling a Package
  - Testing Install and Uninstall
- The Filesystem Hierarchy Standard
- Supporting Standard Targets and Variables
  - Standard Targets
  - Standard Variables
  - Adding Location Variables to Jupiter
- Getting Your Project into a Linux Distro
- Build vs. Installation Prefix Overrides
- User Variables
- Configuring Your Package
- Summary

3: CONFIGURING YOUR PROJECT WITH AUTOCONF

- Autoconf Configuration Scripts
- The Shortest configure.ac File
- Comparing M4 to the C Preprocessor
- The Nature of M4 Macros
- Executing autoconf
- Executing configure
- Executing config.status
- Adding Some Real Functionality
- Generating Files from Templates
- Adding VPATH Build Functionality
- Let’s Take a Breather
- An Even Quicker Start with autoscan
  - The Proverbial autogen.sh Script
  - Updating Makefile.in
- Initialization and Package Information
  - AC_PREREQ
  - AC_INIT
  - AC_CONFIG_SRCDIR

- The Instantiating Macros
  - AC_CONFIG_HEADERS
  - Using autoheader to Generate an Include File Template
- Back to Remote Builds for a Moment
- Summary

4: MORE FUN WITH AUTOCONF: CONFIGURING USER OPTIONS

- Substitutions and Definitions
  - AC_SUBST
  - AC_DEFINE
- Checking for Compilers
- Checking for Other Programs
- A Common Problem with Autoconf
- Checks for Libraries and Header Files
  - Is It Right or Just Good Enough?
  - Printing Messages
- Supporting Optional Features and Packages
  - Coding Up the Feature Option
  - Formatting Help Strings
- Checks for Type and Structure Definitions
- The AC_OUTPUT Macro
- Summary

5: AUTOMATIC MAKEFILES WITH AUTOMAKE

- Getting Down to Business
  - Enabling Automake in configure.ac
  - A Hidden Benefit: Automatic Dependency Tracking
- What’s in a Makefile.am File?
- Analyzing Our New Build System
  - Product List Variables
  - Product Source Variables
  - PLV and PSV Modifiers
- Unit Tests: Supporting make check
- Reducing Complexity with Convenience Libraries
  - Product Option Variables
  - Per-Makefile Option Variables
- Building the New Library
- What Goes into a Distribution?
- Maintainer Mode
- Cutting Through the Noise
- Summary

6: BUILDING LIBRARIES WITH LIBTOOL

- The Benefits of Shared Libraries
- How Shared Libraries Work
  - Dynamic Linking at Load Time
  - Automatic Dynamic Linking at Runtime
  - Manual Dynamic Linking at Runtime
- Using Libtool
  - Abstracting the Build Process
  - Abstraction at Runtime
- Installing Libtool
- Adding Shared Libraries to Jupiter
  - Using the LTLIBRARIES Primary
  - Public Include Directories
  - Customizing Libtool with LT_INIT Options
  - Reconfigure and Build
  - So What Is PIC, Anyway?
  - Fixing the Jupiter PIC Problem
- Summary

7: LIBRARY INTERFACE VERSIONING AND RUNTIME DYNAMIC LINKING

- System-Specific Versioning
  - Linux and Solaris Library Versioning
  - IBM AIX Library Versioning
  - HP-UX/AT&T SVR4 Library Versioning
- The Libtool Library Versioning Scheme
  - Library Versioning Is Interface Versioning
  - When Library Versioning Just Isn’t Enough
- Using libltdl
  - Necessary Infrastructure
  - Adding a Plug-In Interface
  - Doing It the Old-Fashioned Way
  - Converting to Libtool’s ltdl Library
  - Preloading Multiple Modules
  - Checking It All Out
- Summary

8: FLAIM: AN AUTOTOOLS EXAMPLE

- What Is FLAIM?
- Why FLAIM?
- An Initial Look
- Getting Started
  - Adding the configure.ac Files
  - The Top-Level Makefile.am File
- The FLAIM Subprojects
  - The FLAIM Toolkit configure.ac File
  - The FLAIM Toolkit Makefile.am File

  - Designing the ftk/src/Makefile.am File
  - Moving On to the ftk/util Directory
- Designing the XFLAIM Build System
  - The XFLAIM configure.ac File
  - Creating the xflaim/src/Makefile.am File
  - Turning to the xflaim/util Directory
- Summary

9: FLAIM PART II: PUSHING THE ENVELOPE

- Building Java Sources Using the Autotools
  - Autotools Java Support
  - Using ac-archive Macros
  - Canonical System Information
  - The xflaim/java Directory Structure
  - The xflaim/src/Makefile.am File
  - Building the JNI C++ Sources
  - The Java Wrapper Classes and JNI Headers
  - A Caveat About Using the JAVA Primary
- Building the C# Sources
  - Manual Installation
  - Cleaning Up Again
- Configuring Compiler Options
- Hooking Doxygen into the Build Process
- Adding Nonstandard Targets
- Summary

10: USING THE M4 MACRO PROCESSOR WITH AUTOCONF

- M4 Text Processing
  - Defining Macros
  - Macros with Arguments
- The Recursive Nature of M4
  - Quoting Rules
- Autoconf and M4
  - The Autoconf M4 Environment
- Writing Autoconf Macros
  - Simple Text Replacement
  - Documenting Your Macros
  - M4 Conditionals
- Diagnosing Problems
- Summary

11

A CATALOG OF TIPS AND REUSABLE SOLUTIONS

FOR CREATING GREAT PROJECTS 271

Item 1: Keeping Private Details out of Public Interfaces .............................................. 272

Solutions in C ................................................................................................ 273

Solutions in C++ ............................................................................................ 273


  - Item 2: Implementing Recursive Extension Targets
  - Item 3: Using a Repository Revision Number in a Package Version
  - Item 4: Ensuring Your Distribution Packages Are Clean
  - Item 5: Hacking Autoconf Macros
    - Providing Library-Specific Autoconf Macros
  - Item 6: Cross-Compiling
  - Item 7: Emulating Autoconf Text Replacement Techniques
  - Item 8: Using the ac-archive Project
  - Item 9: Using pkg-config with Autotools
    - Providing pkg-config Files for Your Library Projects
    - Using pkg-config Files in configure.ac
  - Item 10: Using Incremental Installation Techniques
  - Item 11: Using Generated Source Code
    - Using the BUILT_SOURCES Variable
    - Dependency Management
    - Built Sources Done Right
  - Item 12: Disabling Undesirable Targets
  - Item 13: Watch Those Tab Characters!
  - Item 14: Packaging Choices
  - Wrapping Up
- Index


FOREWORD

When I was asked to do a technical review on a book

about the Autotools, I was rather skeptical. Several

online tutorials and a few books already introduce

readers to the use of GNU Autoconf, Automake, and

Libtool. However, many of these texts are less than ideal in at least some

ways: They were either written several years ago and are starting to show their

age, contain at least some inaccuracies, or tend to be incomplete for typical

beginner’s tasks. On the other hand, the GNU manuals for these programs

are fairly large and rather technical, and as such, they may present a significant

entry barrier to learning your way around the Autotools.

John Calcote began this book with an online tutorial that shared at least

some of the problems facing other tutorials. Around that time, he became a

regular contributor to discussions on the Autotools mailing lists, too. John

kept asking more and more questions, and discussions with him uncovered

some bugs in the Autotools sources and documentation, as well as some

issues in his tutorial.


Since that time, John has reworked the text a lot. The review uncovered

several more issues in both software and book text, a nice mutual benefit. As

a result, this book has become a great introductory text that still aims to be

accurate, up to date with current Autotools, and quite comprehensive in a

way that is easily understood.

Always going by example, John explores the various software layers, portability

issues and standards involved, and features needed for package build

development. If you’re new to the topic, the entry path may just have become

a bit less steep for you.

Ralf Wildenhues

Bonn, Germany

June 2010


PREFACE

I’ve often wondered during the last ten years how it

could be that the only third-party book on the GNU

Autotools that I’ve been able to discover is GNU

AUTOCONF, AUTOMAKE, and LIBTOOL by Gary

Vaughan, Ben Elliston, Tom Tromey, and Ian Lance

Taylor, affectionately known by the community as

The Goat Book (so dubbed for the front cover—an old-

fashioned photo of goats doing acrobatic stunts).¹

I’ve been told by publishers that there is simply no market for such a

book. In fact, one editor told me that he himself had tried unsuccessfully to

entice authors to write this book a few years ago. His authors wouldn’t finish

the project, and the publisher’s market analysis indicated that there was very

little interest in the book. Publishers believe that open source software developers

tend to disdain written documentation. Perhaps they’re right. Interestingly,

books on IT utilities like Perl sell like Perl’s going out of style (which is

actually somewhat true these days), and yet people are still buying enough

Perl books to keep their publishers happy. All of this explains why there are

ten books on the shelf with animal pictures on the cover for Perl, but literally

nothing for open source software developers.

1. Vaughan, Elliston, Tromey, and Taylor, GNU Autoconf, Automake, and Libtool (Indianapolis: Sams Publishing, 2000).

I’ve worked in software development for 25 years, and I’ve used open

source software for quite some time now. I’ve learned a lot about open source

software maintenance and development, and most of what I’ve learned,

unfortunately, has been by trial and error. Existing GNU documentation is

more often reference material than solution-oriented instruction. Had there

been other books on the topic, I would have snatched them all up immediately.

What we need is a cookbook-style approach with the recipes covering

real problems found in real projects. First the basics are covered, sauces and

reductions, followed by various cooking techniques. Finally, master recipes

are presented for culinary wonders. As each recipe is mastered, the reader

makes small intuitive leaps—I call them minor epiphanies. Put enough of these

under your belt and overall mastery of the Autotools is ultimately inevitable.

Let me give you an analogy. I’d been away from math classes for about

three years when I took my first college calculus course. I struggled the entire

semester with little progress. I understood the theory, but I had trouble

with the homework. I just didn’t have the background I needed. So the

next semester, I took college algebra and trigonometry back to back as half-

semester classes. At the end of that semester, I tried calculus again. This time

I did very well—finishing the class with a solid A grade. What was missing the

first time? Just basic math skills. You’d think it wouldn’t have made that much

difference, but it really does.

The same concept applies to learning to properly use the Autotools. You

need a solid understanding of the tools upon which the Autotools are built

in order to become proficient with the Autotools themselves.

Why Use the Autotools?

In the early 1990s, I was working on the final stages of my bachelor’s degree

in computer science at Brigham Young University. I took an advanced computer

graphics class where I was introduced to C++ and the object-oriented

programming paradigm. For the next couple of years, I had a love-hate relationship

with C++. I was a pretty good C coder by that time, and I thought I

could easily pick up C++, as close in syntax as it was to C. How wrong I was!

I fought with the C++ compiler more often than I’d care to recall.

The problem was that the most fundamental differences between C

and C++ are not obvious to the casual observer, because they’re buried

deep within the C++ language specification rather than on the surface in

the language syntax. The C++ compiler generates an amazing amount of

code beneath the covers, providing functionality in a few lines of C++ code

that would require dozens of lines of C code.

Just as programmers then complained of their troubles with C++, so likewise

programmers today complain about similar difficulties with the GNU

Autotools. The differences between make and Automake are very similar to

the differences between C and C++. The most basic single-line Makefile.am


generates a Makefile.in (an Autoconf template) containing 300–400 lines of

parameterized make script, and it tends to increase with each revision of the

tool as more features are added.

Thus, when you use the Autotools, you have to understand the underlying

infrastructure managed by these tools. You need to take the time to

understand the open source software distribution, build, test, and installation

philosophies embodied by (and in many cases even enforced by) these

tools, or you’ll find yourself fighting against the system. Finally, you need to

learn to agree with these basic philosophies because you’ll only become frustrated

if you try to make the Autotools operate outside of the boundaries set

by their designers.

Source-level distribution relegates to the end user a particular portion

of the responsibility of software development that has traditionally been

assumed by the software developer—namely, building products from source

code. But end users are often not developers, so most of them won’t know

how to properly build the package. The solution to this problem, from the

earliest days of the open source movement, has been to make the package

build and installation processes as simple as possible for the end user so that

he could perform a few well-understood steps to have the package built and

installed cleanly on his system.

Most packages are built using the make utility. It’s very easy to type make,

but that’s not the problem. The problem crops up when the package doesn’t

build successfully because of some unanticipated difference between the user’s

system and the developer’s system. Thus was born the ubiquitous configure

script, initially a simple shell script that configured the end user’s environment

so that make could successfully find the required external resources

on the user’s system. Hand-coded configuration scripts helped, but they

weren’t the final answer. They fixed about 65 percent of the problems resulting

from system configuration differences, and they were a pain in the neck

to write properly and to maintain. Dozens of changes were made incrementally

over a period of years, until the script worked properly on most of the

systems anyone cared about. But the entire process was clearly in need of an

upgrade.
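
To make the idea of a hand-coded configuration script concrete, here is a minimal sketch of the kind of logic such a script might contain. It is not taken from any real project: the function names `find_tool` and `write_config`, the candidate compiler names, and the output file `config.mk` are all assumptions chosen for illustration.

```sh
#!/bin/sh
# Hypothetical hand-coded configure helpers (illustrative only, not Autoconf output).

# find_tool NAME...: print the first listed program that exists on PATH.
find_tool() {
    for candidate in "$@"; do
        if command -v "$candidate" >/dev/null 2>&1; then
            echo "$candidate"
            return 0
        fi
    done
    return 1
}

# write_config FILE CC: record the detected settings in a makefile fragment.
write_config() {
    {
        echo "# Generated by configure; do not edit."
        echo "CC = $2"
        echo "prefix = ${prefix:-/usr/local}"
    } > "$1"
}

# Typical use at the bottom of such a script:
#   cc=$(find_tool gcc cc) || { echo "error: no C compiler found" >&2; exit 1; }
#   write_config config.mk "$cc"
```

A makefile could then include the generated fragment to pick up `CC` and `prefix`. Every additional system difference means another hand-written probe like `find_tool`, which is roughly why such scripts became painful to maintain.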

Do you have any idea of the number of build-breaking differences there

are between existing systems today? Neither do I, but there are a handful of

developers in the world who know a large percentage of these differences.

Between them and the open source software community, the GNU Autotools

were born. The Autotools were designed to create configuration scripts and

makefiles that work correctly and provide significant chunks of valuable

end-user functionality under most circumstances, and on most systems,

even on systems not initially considered (or even conceived of) by the package

maintainer.

With this in mind, the primary purpose of the Autotools is not to make

life simpler for the package maintainer (although it really does in the long

run). The primary purpose of the Autotools is to make life simpler for the end user.


Acknowledgments

I could not have written a technical book like this without the help of a lot of

people. I would like to thank Bill Pollock and the editors and staff at No Starch

Press for their patience with a first-time author. They made the process interesting

and fun (and a little painful at times).

Additionally, I’d like to thank the authors and maintainers of the GNU

Autotools for giving the world a standard to live up to and a set of tools that

make it simpler to do so. Specifically, I’d like to thank Ralf Wildenhues, who

believed in this project enough to spend hundreds of hours of his personal

time in technical review. His comments and insight were invaluable in taking

this book from mere wishful thinking to an accurate and useful text.

I would also like to thank my friend Cary Petterborg for encouraging me

to “just go ahead and do it,” when I told him it would probably never happen.

Finally, I’d like to thank my wife Michelle and my children: Ethan,

Mason, Robby, Haley, Joey, Nick, and Alex for allowing me to spend all of

that time away from them while I worked on the book. A novel would have

been easier (and more lucrative), but the world has plenty of novels and not

enough books about the Autotools.

I Wish You the Very Best

I spent a long time and a lot of effort learning what I now know about the

Autotools. Most of this learning process was more painful than it really had

to be. I’ve written this book so that you won’t have to struggle to learn what

should be a core set of tools for the open source programmer. Please feel

free to contact me, and let me know your experiences with learning the

Autotools. I can be reached at my personal email address at john.calcote@gmail.com.

Good luck in your quest for a better software development

experience!

John Calcote

Elk Ridge, Utah

June 2010


INTRODUCTION

Few software developers would deny that

GNU Autoconf, Automake, and Libtool

(the Autotools) have revolutionized the open

source software world. But while there are many

thousands of Autotools advocates, there are also many

developers who hate the Autotools—with a passion.

The reason for this dread of the Autotools, I think, is that when you use the

Autotools, you have to understand the underlying infrastructure that they

manage. Otherwise, you’ll find yourself fighting against the system.

This book solves this problem by first providing a framework for understanding

the underlying infrastructure of the Autotools and then building

on that framework with a tutorial-based approach to teaching Autotools

concepts in a logically ordered fashion.


Who Should Read This Book

This book is for the open source software package maintainer who wants to

become an Autotools expert. Existing material on the subject is limited to

the GNU Autotools manuals and a few Internet-based tutorials. For years

most real-world questions have been answered on the Autotools mailing lists,

but mailing lists are an inefficient form of teaching because the same answers

to the same questions are given time and again. This book provides a

cookbook-style approach, covering real problems found in real projects.

How This Book Is Organized

This book moves from high-level concepts to mid-level use cases and examples

and then finishes with more advanced details and examples. As though we

were learning arithmetic, we’ll begin with some basic math—algebra and

trigonometry—and then move on to analytical geometry and calculus.

Chapter 1 presents a general overview of the packages that are considered

part of the GNU Autotools. This chapter describes the interaction

between these packages and the files consumed by and generated by each

one. In each case, figures depict the flow of data from hand-coded input to

final output files.

Chapter 2 covers open source software project structure and organization.

This chapter also goes into some detail about the GNU Coding Standards

(GCS) and the Filesystem Hierarchy Standard (FHS), both of which have played

vital roles in the design of the GNU Autotools. It presents some fundamental

tenets upon which the design of each of the Autotools is based. With these

concepts, you’ll better understand the theory behind the architectural decisions

made by the Autotools designers.

In this chapter, we’ll also design a simple project, Jupiter, from start to

finish using hand-coded makefiles. We’ll add to Jupiter in a stepwise fashion

as we discover functionality that we can use to simplify tasks.

Chapters 3 and 4 present the framework designed by the GNU Autoconf

engineers to ease the burden of creating and maintaining portable, functional

project configuration scripts. The GNU Autoconf package provides

the basis for creating complex configuration scripts with just a few lines of

information provided by the project maintainer.

In these chapters, we’ll quickly convert our hand-coded makefiles into

Autoconf Makefile.in templates and then begin adding to them in order to

gain some of the most significant Autoconf benefits. Chapter 3 discusses the

basics of generating configuration scripts, while Chapter 4 moves on to more

advanced Autoconf topics, features, and uses.

Chapter 5 discusses converting the Jupiter project Makefile.in templates

into Automake Makefile.am files. Here you’ll discover that Automake is to

makefiles what Autoconf is to configuration scripts. This chapter presents

the major features of Automake in a manner that will not become outdated

as new versions of Automake are released.


Chapters 6 and 7 explain basic shared-library concepts and show how

to build shared libraries with Libtool—a stand-alone abstraction for shared

library functionality that can be used with the other Autotools. Chapter 6

begins with a shared-library primer and then covers some basic Libtool

extensions that allow Libtool to be a drop-in replacement for the more

basic library generation functionality provided by Automake. Chapter 7

covers library versioning and runtime dynamic module management features

provided by Libtool.

Chapters 8 and 9 show the transformation of an existing, fairly complex,

open source project (FLAIM) from using a hand-built build system to using

an Autotools build system. This example will help you to understand how you

might autoconfiscate one of your own existing projects.

Chapter 10 provides an overview of the features of the M4 macro processor

that are relevant to obtaining a solid understanding of Autoconf. This

chapter also considers the process of writing your own Autoconf macros.

Chapter 11 is a compilation of tips, tricks, and reusable solutions to

Autoconf problems. The solutions in this chapter are presented as a set of

individual topics or items. Each item can be understood without context

from the surrounding items.

Most of the examples shown in listings in this book are available for

download from http://www.nostarch.com/autotools.htm.

Conventions Used in This Book

This book contains hundreds of program listings in roughly two categories:

console examples and file listings. Console examples have no captions, and

their commands are bolded. File listings contain full or partial listings of the

files discussed in the text. All named listings are provided in the downloadable

archive. Listings without filenames are entirely contained in the printed

listing itself. In general, bolded text in listings indicates changes made to a

previous version of that listing.

For listings related to the Jupiter and FLAIM projects, the caption specifies

the path of the file relative to the project root directory.

Throughout this book, I refer to the GNU/Linux operating system simply

as Linux. It should be understood that by the use of the term Linux, I’m

referring to GNU/Linux, its actual official name. I use Linux simply as shorthand

for the official name.

Autotools Versions Used in This Book

The Autotools are always being updated—on average, a significant update of

each of the three tools, Autoconf, Automake, and Libtool, is released every

year and a half, and minor updates are released every three to six months.

The Autotools designers attempt to maintain a reasonable level of backward

compatibility with each new release, but occasionally something significant is

broken, and older documentation simply becomes out of date.


While I describe new significant features of recent releases of the Autotools,

in my efforts to make this a more timeless work, I’ve tried to stick to

descriptions of Autoconf features (macros for instance) that have been in

widespread use for several years. Minor details change occasionally, but the

general use has stayed the same through many releases.

At appropriate places in the text, I mention the versions of the Autotools

that I’ve used for this book, but I’ll summarize here. I’ve used version 2.64 of

Autoconf, version 1.11 of Automake, and version 2.2.6 of Libtool. These were

the latest versions as of this writing, and even through the publication process,

I was able to make minor corrections and update to new releases as they

became available.


A BRIEF INTRODUCTION TO THE GNU AUTOTOOLS

We shall not cease from exploration

And the end of all our exploring

Will be to arrive where we started

And know the place for the first time.

—T.S. Eliot, “Quartet No. 4: Little Gidding”

As stated in the preface to this book, the

purpose of the GNU Autotools is to make

life simpler for the end user, not the maintainer.

Nevertheless, using the Autotools will

make your job as a project maintainer easier in the

long run, although maybe not for the reasons you suspect. The Autotools

framework is as simple as it can be, given the functionality it provides. The

real purpose of the Autotools is twofold: it serves the needs of your users, and

it makes your project incredibly portable—even to systems on which you’ve

never tested, installed, or built your code.

Throughout this book, I will often use the term Autotools, although you

won’t find a package in the GNU archives with this label. I use this term to

signify the following three GNU packages, which are considered by the community

to be part of the GNU build system:

- Autoconf, which is used to generate a configuration script for a project
- Automake, which is used to simplify the process of creating consistent and functional makefiles
- Libtool, which provides an abstraction for the portable creation of shared libraries


Other build tools, such as the open source packages CMake and SCons,

attempt to provide the same functionality as the Autotools but in a more

user-friendly manner. However, the functionality these tools attempt to hide

behind GUIs and script builders actually ends up making them less

functional.

Who Should Use the Autotools?

If you’re writing open source software that targets Unix or Linux systems, you

should absolutely be using the GNU Autotools, and even if you’re writing

proprietary software for Unix or Linux systems, you’ll still benefit significantly

from using them. The Autotools provide you with a build environment that

will allow your project to build successfully on future versions or distributions

with virtually no changes to the build scripts. This is useful even if you only

intend to target a single Linux distribution, because—let’s be honest—you

really can’t know in advance whether or not your company will want your software

to run on other platforms in the future.

When Should You Not Use the Autotools?

About the only time it makes sense not to use the Autotools is when you’re

writing software that will only run on non-Unix platforms, such as Microsoft

Windows. Although the Autotools have limited support for building Windows

software, it’s my opinion that the POSIX/FHS runtime environment embraced

by these tools is just too different from the Windows runtime environment to

warrant trying to shoehorn a Windows project into the Autotools paradigm.

Autotools support for Windows requires a Cygwin¹ or MSYS² environment

in order to work correctly, because Autoconf-generated configuration scripts

are Bourne-shell scripts, and Windows doesn’t provide a native Bourne shell.

Unix and Microsoft tools are just different enough in command-line options

and runtime characteristics that it’s often simpler to use Windows ports of GNU

tools, such as GCC or MinGW, to build Windows programs with an Autotools

build system.

I’ve seen truly portable build systems that use these environments and

tool sets to build Windows software using Autotools scripts that are common

between Windows and Unix. The shim libraries provided by portability environments

like Cygwin make the Windows operating system look POSIX enough

to pass for Unix in a pinch, but they sacrifice performance and functionality for

the sake of portability. The MinGW approach is a little better in that it targets the

native Windows API. In any case, these sorts of least-common-denominator

approaches merely serve to limit the possibilities of your code on Windows.

I’ve also seen developers customize the Autotools to generate build scripts

that use native (Microsoft) Windows tools. These people spend much of their

time tweaking their build systems to do things they were never intended to

do, in a hostile and foreign environment. Their makefiles contain entirely

different sets of functionality based on the target and host operating systems:

one set of code to build a project on Windows and another to build on

POSIX systems. This does not constitute a portable build system; it only portrays

the vague illusion of one.

1. Cygwin Information and Installation, http://www.cygwin.com/.

2. MinGW and MSYS, Minimalist GNU for Windows, http://www.mingw.org/.

For these reasons, I focus exclusively in this book on using the Autotools

on POSIX-compliant platforms.

NOTE I’m not a typical Unix bigot. While I love Unix (and especially Linux), I also appreciate

Windows for the areas in which it excels.³ For Windows development, I highly recommend

using Microsoft tools. The original reasons for using GNU tools to develop Windows

programs are more or less academic nowadays, because Microsoft has made the better

part of its tools available for download at no cost. (For download information, see

Microsoft Express at http://www.microsoft.com/Express.)

Apple Platforms and Mac OS X

The Macintosh operating system has been POSIX compliant since 2001, when

Mac OS version 10 (OS X) was released. OS X is derived from NeXTSTEP/

OpenStep, which is based on the Mach kernel, with parts taken from FreeBSD

and NetBSD. As a POSIX-compliant operating system, OS X provides all the

infrastructure required by the Autotools. The problems you’ll encounter with

OS X will most likely involve Apple’s user interface and package-management

systems, both of which are specific to the Mac.

The user interface presents the same issues you encounter when dealing

with X Windows on other Unix platforms, and then some. The primary difference

is that X Windows is used exclusively on most Unix systems, but Mac

OS has its own graphical user interface called Cocoa. While X Windows can be

used on the Mac (Apple provides a window manager that makes X applications

look a lot like native Cocoa apps), Mac programmers will sometimes wish to

take full advantage of the native user interface features provided by the operating

system.

The Autotools skirt the issue of package management differences between

Unix platforms by simply ignoring it. They create packages that are little more

than compressed archives using the tar and gzip utilities, and they install and

uninstall products from the make command line. The Mac OS package management

system is an integral part of installing an application on an Apple system,

and projects like Fink (http://www.finkproject.org/) and MacPorts

(http://www.macports.org/) help make existing open source packages available on the

Mac by providing simplified mechanisms for converting Autotools packages

into installable Mac packages.
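
The remark that Autotools packages are little more than compressed archives built with tar and gzip can be illustrated with a short shell sketch. This is a hand-rolled approximation under stated assumptions, not what the Autotools actually run: the function name `make_dist` is hypothetical, and the package name used in the usage note (jupiter, borrowed from this book's sample project) and its version number are only examples.

```sh
#!/bin/sh
# make_dist NAME VERSION SRCDIR: write NAME-VERSION.tar.gz to the current directory.
make_dist() {
    name=$1
    version=$2
    srcdir=$3
    stage=$(mktemp -d) || return 1

    # Stage the files under a versioned top-level directory so the archive
    # unpacks into NAME-VERSION/ rather than into the user's current directory.
    mkdir "$stage/$name-$version" &&
        cp -R "$srcdir"/. "$stage/$name-$version"/ &&
        (cd "$stage" && tar -cf - "$name-$version") | gzip -9 > "$name-$version.tar.gz"
    status=$?

    rm -rf "$stage"
    return $status
}
```

Calling `make_dist jupiter 1.0 ./src` would leave `jupiter-1.0.tar.gz` in the current directory, and a user could unpack it with `gzip -dc jupiter-1.0.tar.gz | tar -xf -`. Nothing in the archive is specific to any platform's package manager, which is the point the text makes.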

The bottom line is that the Autotools can be used quite effectively on

Apple Macintosh systems running OS X or later, as long as you keep these

caveats in mind.

3. Hard core gamers will agree with me, I’m sure. I’m writing this book on a laptop running

Windows 7, but I’m using OpenOffice.org as my text editor, and I’m writing the book’s sample

code on my 3GHz 64-bit dual processor Opensuse 11.2 Linux workstation.


The Choice of Language

Your choice of programming language is another important factor to consider

when deciding whether to use the Autotools. Remember that the Autotools

were designed by GNU people to manage GNU projects. In the GNU community,

there are two factors that determine the importance of a computer

programming language:

- Are there any GNU packages written in the language?
- Does the GNU compiler toolset support the language?

Autoconf provides native support for the following languages based on

these two criteria (by native support, I mean that Autoconf will compile, link,

and run source-level feature checks in these languages):

- C
- C++
- Objective C
- Fortran
- Fortran 77
- Erlang
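
As a rough idea of what a source-level feature check amounts to, the following shell sketch writes a small C program to a temporary file and reports whether the compiler accepts it. It is a simplified illustration, not code produced by Autoconf: the function name `check_compile` is hypothetical, the compiler is assumed to be whatever `CC` names (falling back to `cc`), and only the compile step is shown, without the link and run steps.

```sh
#!/bin/sh
# check_compile SOURCE_TEXT: print "yes" if the C compiler accepts the program,
# and "no" otherwise (including when no compiler can be found).
check_compile() {
    dir=$(mktemp -d) || return 1
    printf '%s\n' "$1" > "$dir/conftest.c"

    # CC is left unquoted on purpose so it may carry flags, e.g. CC="gcc -std=c99".
    if ${CC:-cc} -c "$dir/conftest.c" -o "$dir/conftest.o" >/dev/null 2>&1; then
        result=yes
    else
        result=no
    fi

    rm -rf "$dir"
    echo "$result"
}

# Example use:
#   printf 'checking for stdlib.h... '
#   check_compile '#include <stdlib.h>
#   int main(void) { return 0; }'
```

A check like this only works for a language whose compiler the script knows how to drive, which is why native support in Autoconf is tied to specific languages.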

Therefore, if you want to build a Java package, you can configure Automake

to do so (as we’ll see in Chapters 8 and 9), but you can’t ask Autoconf

to compile, link, or run Java-based checks,⁴ because Autoconf simply doesn’t

natively support Java. However, you can find Autoconf macros (which I will

cover in more detail in later chapters) that enhance Autoconf’s ability to

manage the configuration process for projects written in Java.

Open source software developers are actively at work on the gcj compiler

and toolset, so some native Java support may ultimately be added to Autoconf.

But as of this writing, gcj is still a bit immature, and very few GNU packages are

currently written in Java, so the issue is not yet critical to the GNU community.

Rudimentary support does exist in Automake for both GNU (gcj) and

non-GNU Java compilers and JVMs. I’ve used these features myself on projects

and they work well, as long as you don’t try to push them too far.

If you’re into Smalltalk, Ada, Modula, Lisp, Forth, or some other non-mainstream

language, you’re probably not too interested in porting your code

to dozens of platforms and CPUs. However, if you are using a non-mainstream

language, and you’re concerned about the portability of your build systems,

consider adding support for your language to the Autotools yourself. This

is not as daunting a task as you may think, and I guarantee that you’ll be an

Autotools expert when you’re finished.5

4. This statement is not strictly true: I’ve seen third-party macros that use the JVM to execute

Java code within checks, but these are usually very special cases. None of the built-in Autoconf

checks rely on a JVM in any way. Chapters 8 and 9 outline how you might use a JVM in an Autoconf

check. Additionally, the portable nature of Java and the Java virtual machine specification make

it fairly unlikely that you’ll need to perform a Java-based Autoconf check in the first place.

5. For example, native Erlang support made it into the Autotools because members of the

Erlang community thought it was important enough to add it themselves.

Generating Your Package Build System

The GNU Autotools framework includes three main packages: Autoconf,

Automake, and Libtool. The tools in these packages can generate code that

depends on utilities and functionality from the gettext, m4, sed, make, and perl

packages, among others.

With respect to the Autotools, it’s important to distinguish between a

maintainer’s system and an end user’s system. The design goals of the Autotools

specify that an Autotools-generated build system should rely only on tools

that are readily available and preinstalled on the end user’s machine. For

example, the machine a maintainer uses to create distributions requires a

Perl interpreter, but a machine on which an end user builds products from

release distribution packages should not require Perl.

A corollary is that an end user’s machine doesn’t need to have the Autotools

installed—an end user’s system only requires a reasonably POSIX-compliant

version of make and some variant of the Bourne shell that can execute the

generated configuration script. And, of course, any package will also require

compilers, linkers, and other tools deemed necessary by the project maintainer

to convert source files into executable binary programs, help files, and other

runtime resources.
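As a rough illustration of these end-user requirements, the following sketch checks whether a machine has the minimal tools described above. The helper name `have_tool` and the choice of `cc` as the compiler to look for are assumptions made for this example; a real package may need different or additional tools.

```shell
#!/bin/sh
# Sketch: report whether the minimal end-user build tools are present.
# have_tool is a hypothetical helper, not part of the Autotools.
have_tool() {
  command -v "$1" >/dev/null 2>&1
}

# A Bourne-compatible shell, make, and a C compiler (assumed to be "cc").
for tool in sh make cc; do
  if have_tool "$tool"; then
    echo "found:   $tool"
  else
    echo "missing: $tool"
  fi
done
```

Note that nothing in this list belongs to the Autotools themselves; only the maintainer's machine needs those.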

If you’ve ever downloaded, built, and installed software from a tarball—a

compressed archive with a .tar.gz, .tgz, .tar.bz2, or other such extension—you’re

undoubtedly aware of the general process. It usually looks something like this:

$ gzip -cd hackers-delight-1.0.tar.gz | tar xvf -

...

$ cd hackers-delight-1.0

$ ./configure && make

...

$ sudo make install

...

NOTE If you’ve performed this sequence of commands, you probably know what they mean,

and you have a basic understanding of the software development process. If this is the

case, you’ll have no trouble following the content of this book.

Most developers understand the purpose of the make utility, but what’s the

point of configure? While Unix systems have followed the de facto standard

Unix kernel interface for decades, most software has to stretch beyond these

boundaries.

Originally, configuration scripts were hand-coded shell scripts designed

to set variables based on platform-specific characteristics. They also allowed

users to configure package options before running make. This approach worked

well for decades, but as the number of Linux distributions and custom Unix systems

grew, the variety of features and installation and configuration options

exploded, so it became very difficult to write a decent portable configuration

script. In fact, it was much more difficult to write a portable configuration script

than it was to write makefiles for a new project. Therefore, most people just

created configuration scripts for their projects by copying and modifying the

script for a similar project.
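To make the problem concrete, here is a minimal sketch of what such a hand-coded configuration script might have looked like. It is not taken from any real project: the function name `hand_configure`, the platform cases, the library flags, and the output file name `config.mk` are all assumptions chosen for illustration.

```shell
#!/bin/sh
# Hypothetical hand-written configuration script, wrapped in a function
# so the example is easy to try. All names here are illustrative.
hand_configure() {
  prefix=/usr/local                 # default installation prefix

  # Let the user choose package options before running make.
  for arg in "$@"; do
    case $arg in
      --prefix=*) prefix=${arg#--prefix=} ;;
      *) echo "unknown option: $arg" >&2; return 1 ;;
    esac
  done

  # Set variables based on platform-specific characteristics.
  case $(uname -s) in
    Linux) LIBS="-ldl" ;;
    SunOS) LIBS="-lsocket -lnsl" ;;
    *)     LIBS="" ;;
  esac

  # Record the results where a makefile can include them.
  printf 'prefix = %s\nLIBS = %s\n' "$prefix" "$LIBS" > config.mk
}

# Try it in a scratch directory so nothing in the current tree changes.
outdir=$(mktemp -d)
(cd "$outdir" && hand_configure --prefix=/opt/demo)
cat "$outdir/config.mk"
```

Every newly supported platform or option adds another branch to a script like this, which is why such scripts tended to be copied and patched rather than written from scratch.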

In the early 1990s, it was apparent to many open source software developers

that project configuration would become painful if something wasn’t

done to ease the burden of writing massive shell scripts to manage configuration

options. The number of GNU project packages had grown to hundreds,

and maintaining consistency between their separate build systems had become

more time consuming than simply maintaining the code for these projects.

These problems had to be solved.

Autoconf

Autoconf6 changed this paradigm almost overnight. David MacKenzie started

the Autoconf project in 1991, but a look at the AUTHORS file in the Savannah

Autoconf project7 repository will give you an idea of the number of people

that had a hand in making the tool. Although configuration scripts were long

and complex, users only needed to specify a few variables when executing

them. Most of these variables were simply choices about components, features,

and options, such as: Where can the build system find libraries and header files?

Where do I want to install my finished products? Which optional components do I

want to build into my products?

Instead of modifying and debugging hundreds of lines of supposedly

portable shell script, developers can now write a short meta-script file using a

concise, macro-based language, and Autoconf will generate a perfect configuration

script that is more portable, more accurate, and more maintainable

than a hand-coded one. In addition, Autoconf often catches semantic or logic

errors that could otherwise take days to debug. Another benefit of Autoconf

is that the shell code it generates is portable between most variations of the

Bourne shell. Mistakes made in portability between shells are very common,

and, unfortunately, are the most difficult kinds of mistakes to find, because

no one developer has access to all Bourne-like shells.

NOTE While scripting languages like Perl and Python are now more pervasive than the Bourne

shell, this was not the case when the idea for Autoconf was first conceived.

Autoconf-generated configuration scripts provide a common set of options

that are important to all portable software projects running on POSIX systems.

These include options to modify standard locations (a concept I’ll cover in

more detail in Chapter 2), as well as project-specific options defined in the

configure.ac file (which I’ll discuss in Chapter 3).

The autoconf package provides several programs, including the following:

- autoconf
- autoheader
- autom4te
- autoreconf
- autoscan
- autoupdate
- ifnames

6. For more on Autoconf origins, see the GNU webpage on the topic at http://www.gnu.org/software/autoconf.

7. See http://savannah.gnu.org/projects/autoconf.

autoconf

autoconf is a simple Bourne shell script. Its main task is to ensure that the current

shell contains the functionality necessary to execute the M4 macro processor.

(I’ll discuss Autoconf’s use of M4 in detail in Chapter 3.) The remainder

of the script parses command-line parameters and executes autom4te.

autoreconf

The autoreconf utility executes the configuration tools in the autoconf,

automake, and libtool packages as required by each project. autoreconf

minimizes the amount of regeneration required to address changes in

timestamps, features, and project state. It was written as an attempt to

consolidate existing maintainer-written, script-based utilities that ran all

the required Autotools in the right order. You can think of autoreconf as a

sort of smart Autotools bootstrap utility. If all you have is a configure.ac file,

you can run autoreconf to execute all the tools you need, in the correct

order, so that configure will be properly generated.
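The following sketch shows that bootstrap step in a scratch directory that holds nothing but a two-line configure.ac. The package name `demo`, the version, and the bug-report address are placeholders. The script skips the run if autoreconf is not installed, and on some systems autoreconf also expects the automake package to be present.

```shell
#!/bin/sh
# Sketch: bootstrap a project that contains nothing but configure.ac.
# "demo", "1.0", and the e-mail address are placeholder values.
workdir=$(mktemp -d)
cd "$workdir" || exit 1

cat > configure.ac <<'EOF'
AC_INIT([demo], [1.0], [bugs@example.com])
AC_OUTPUT
EOF

if command -v autoreconf >/dev/null 2>&1; then
  # --install adds any missing auxiliary files; --verbose reports
  # which tools autoreconf decides to run.
  autoreconf --install --verbose && ls configure ||
    echo "autoreconf did not complete; check the messages above" >&2
else
  echo "autoreconf not found; install the autoconf package to try this" >&2
fi
```

If the run succeeds, the directory should contain a generated configure script alongside the original configure.ac.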

autoheader

The autoheader utility generates a C/C++–compatible header file template

from various constructs in configure.ac. This file is usually called config.h.in.

When the end user executes configure, the configuration script generates

config.h from config.h.in. As maintainer, you’ll use autoheader to generate the

template file that you will include in your distribution package. (We’ll examine

autoheader in greater detail in Chapter 3.)
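As a small sketch of this step, the script below writes a configure.ac that requests a config.h header and checks for one system header, then runs autoheader on it. The package name `demo` and the choice of `unistd.h` are assumptions made for the example.

```shell
#!/bin/sh
# Sketch: generate a config.h.in template with autoheader.
# "demo" and the unistd.h check are illustrative choices.
workdir=$(mktemp -d)
cd "$workdir" || exit 1

cat > configure.ac <<'EOF'
AC_INIT([demo], [1.0])
AC_CONFIG_HEADERS([config.h])
AC_CHECK_HEADERS([unistd.h])
AC_OUTPUT
EOF

if command -v autoheader >/dev/null 2>&1; then
  # autoheader reads configure.ac and writes config.h.in beside it.
  autoheader && grep 'HAVE_UNISTD_H' config.h.in ||
    echo "autoheader did not complete; check the messages above" >&2
else
  echo "autoheader not found; install the autoconf package to try this" >&2
fi
```

The template should contain an `#undef` placeholder for each definition that configure can later fill in when it creates config.h on the end user's machine.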

autoscan

The autoscan program generates a default configure.ac file for a new project; it

can also examine an existing Autotools project for flaws and opportunities

for enhancement. (We’ll discuss autoscan in more detail in Chapters 3 and 8.)

autoscan is very useful as a starting point for a project that uses a

non-Autotools-based build system, but it may also be useful for suggesting features that might

enhance an existing Autotools-based project.
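To try this out, the sketch below runs autoscan on a scratch directory containing a single C source file. The file name `main.c` and its contents are made up for the example. autoscan writes its proposal to a file named configure.scan, which a maintainer would normally review and rename to configure.ac.

```shell
#!/bin/sh
# Sketch: let autoscan propose a configure.ac for a tiny source tree.
# main.c is an invented example file.
workdir=$(mktemp -d)
cd "$workdir" || exit 1

cat > main.c <<'EOF'
#include <stdio.h>
#include <stdlib.h>
#include <unistd.h>

int main(void)
{
    printf("pid %d\n", (int) getpid());
    return EXIT_SUCCESS;
}
EOF

if command -v autoscan >/dev/null 2>&1; then
  # The suggested configuration lands in configure.scan.
  autoscan && cat configure.scan ||
    echo "autoscan did not complete; check the messages above" >&2
else
  echo "autoscan not found; install the autoconf package to try this" >&2
fi
```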

autoupdate

The autoupdate utility is used to update configure.ac or the template (.in) files

to match the syntax supported by the current version of the Autotools.

ifnames

The ifnames program is a small and generally underused utility that accepts a list

of source file names on the command line and displays a list of C-preprocessor

definitions on the stdout device. This utility was designed to help maintainers

determine what to put into the configure.ac and Makefile.am files to make them

portable. If your project was written with some level of portability in mind,

ifnames can help you determine where those attempts at portability are located

in your source tree and give you the names of potential portability definitions.
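A quick way to see what ifnames reports is to point it at a small file that contains a few preprocessor conditionals. The file `port.c` and the identifiers tested in it are assumptions made for this sketch.

```shell
#!/bin/sh
# Sketch: list the preprocessor identifiers a source file tests.
# port.c is an invented example file.
workdir=$(mktemp -d)
cd "$workdir" || exit 1

cat > port.c <<'EOF'
#ifdef HAVE_UNISTD_H
# include <unistd.h>
#endif
#if defined(_WIN32) && !defined(__CYGWIN__)
# include <windows.h>
#endif
int main(void) { return 0; }
EOF

if command -v ifnames >/dev/null 2>&1; then
  # Prints each identifier followed by the files in which it is tested.
  ifnames port.c
else
  echo "ifnames not found; install the autoconf package to try this" >&2
fi
```

Identifiers such as `HAVE_UNISTD_H` in the listing are candidates for feature checks in configure.ac.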

autom4te

The autom4te utility is an intelligent caching wrapper for M4 that is used by

most of the other Autotools. The autom4te cache decreases the time successive

tools spend accessing configure.ac constructs by as much as 30 percent.

I won’t spend a lot of time on autom4te (pronounced automate) because

it’s primarily used internally by the Autotools. The only sign that it’s working

is the autom4te.cache directory that will appear in your top-level project directory

after you run autoconf or autoreconf.

Working Together

Of the tools listed above, autoconf and autoheader are the only ones project

maintainers will use directly when generating a configure script, and autoreconf

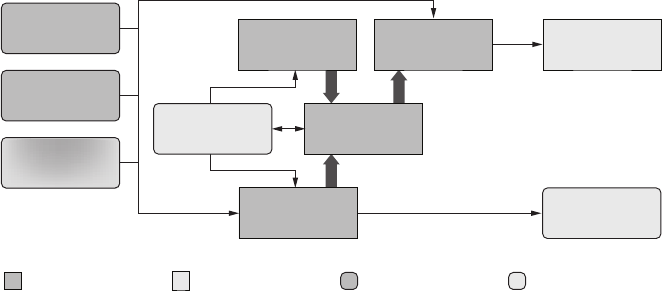

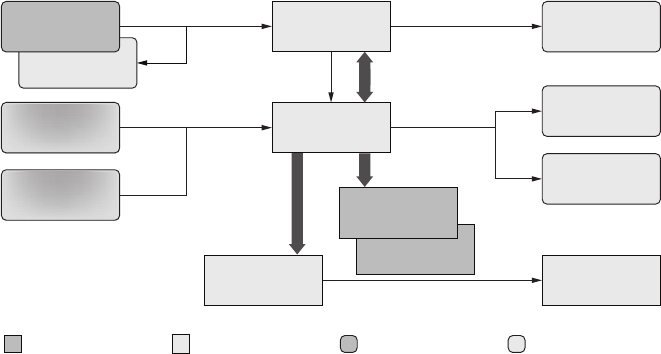

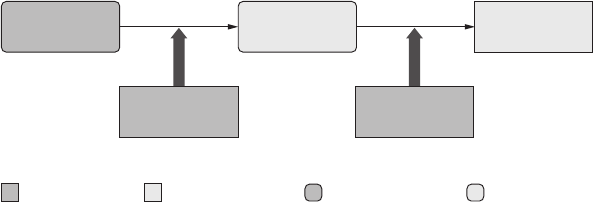

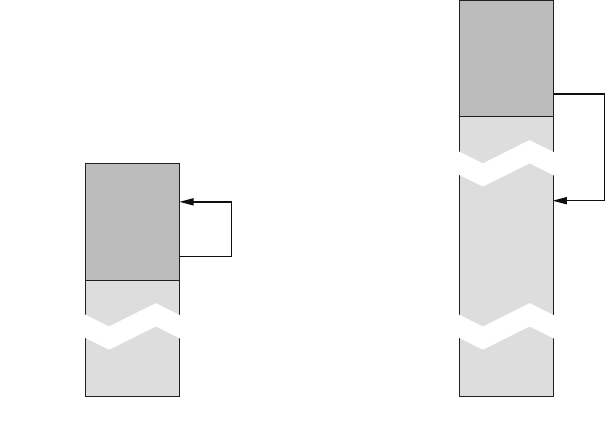

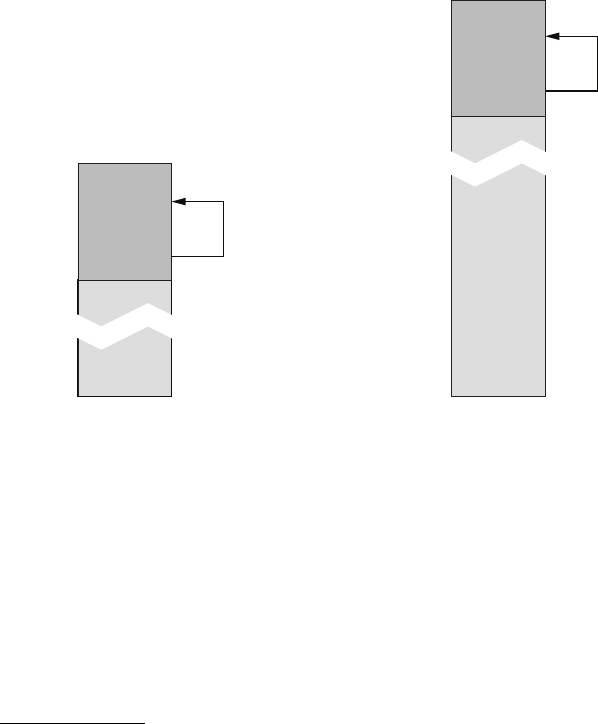

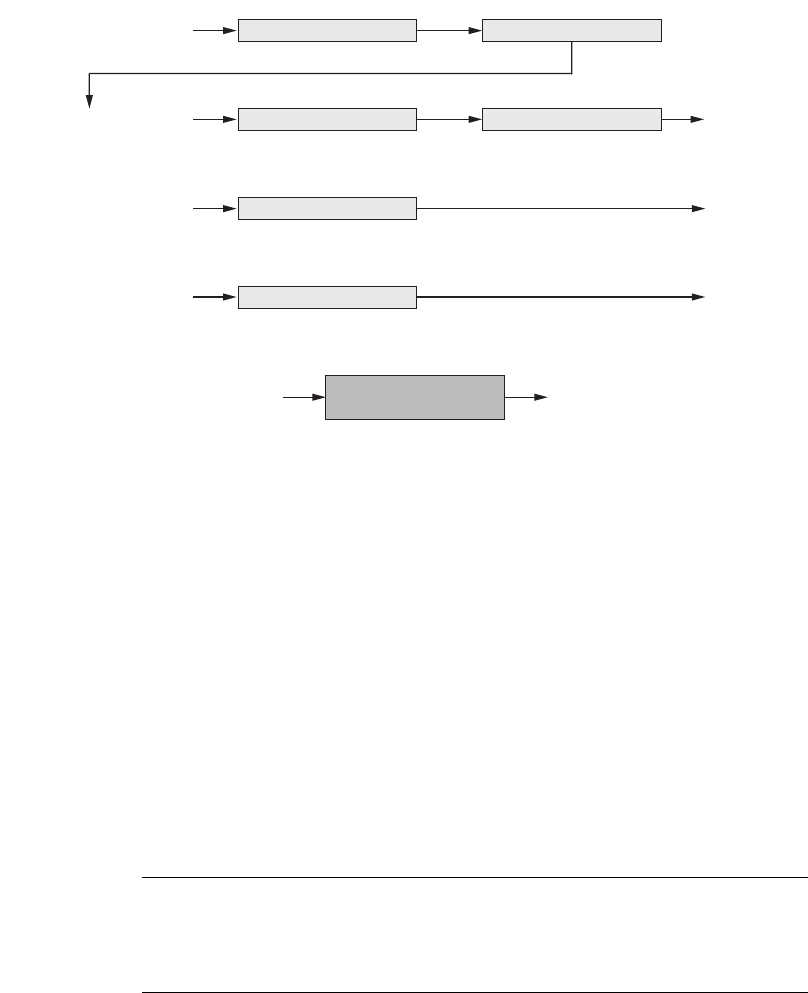

is the only one that the developer needs to directly execute. Figure 1-1

shows the interaction between input files and autoconf and autoheader that

generates the corresponding product files.

Figure 1-1: A data flow diagram for autoconf and autoheader

NOTE I will use the data flow diagram format shown in Figure 1-1 throughout this book.