Azure Strategic Implementation Guide For IT Organizations New To



Azure Strategy and Implementation Guide

PUBLISHED BY

Microsoft Corporation

One Microsoft Way

Redmond, Washington 98052-6399

Copyright © 2018 by Microsoft Corporation

All rights reserved. No part of the contents of this book may be reproduced or transmitted in any form or by any means without the written permission of the publisher.

This book is provided “as-is” and expresses the author’s views and opinions. The views, opinions and information expressed in this book, including URL and other Internet website references, may change without notice.

Some examples depicted herein are provided for illustration only and are fictitious. No real association or connection is intended or should be inferred.

Microsoft and the trademarks listed at http://www.microsoft.com on the “Trademarks” webpage are trademarks of the Microsoft group of companies. All other marks are property of their respective owners.

Authors: Joachim Hafner, Simon Schwingel, Tyler Ayers, and Rolf McLaughlin (MASUCH). Introduction by Britt Johnston.

Editorial Production: Dianne Russell, Octal Publishing, Inc.

Copyeditor: Bob Russell, Octal Publishing, Inc.

ii Contents

Contents

Introduction ................................................................................................................................................ iv

Chapter 1: Microsoft Azure governance ................................................................................................. 1

Cloud envisioning .................................................................................................................................................................... 2

Cloud readiness ........................................................................................................................................................................ 3

Cloud readiness framework ............................................................................................................................................ 3

Organizational cloud readiness .................................................................................................................................. 10

Chapter 2: Architecture ............................................................................................................................ 13

Security ..................................................................................................................................................................................... 16

Securing the modern enterprise ................................................................................................................................ 17

Identity versus network security perimeter ........................................................................................................... 19

Data protection...................................................................................................................................................................... 22

Data classification ............................................................................................................................................................ 22

Threat management........................................................................................................................................................ 25

Identity ...................................................................................................................................................................................... 28

Azure AD .............................................................................................................................................................................. 28

Domain topology ............................................................................................................................................................. 32

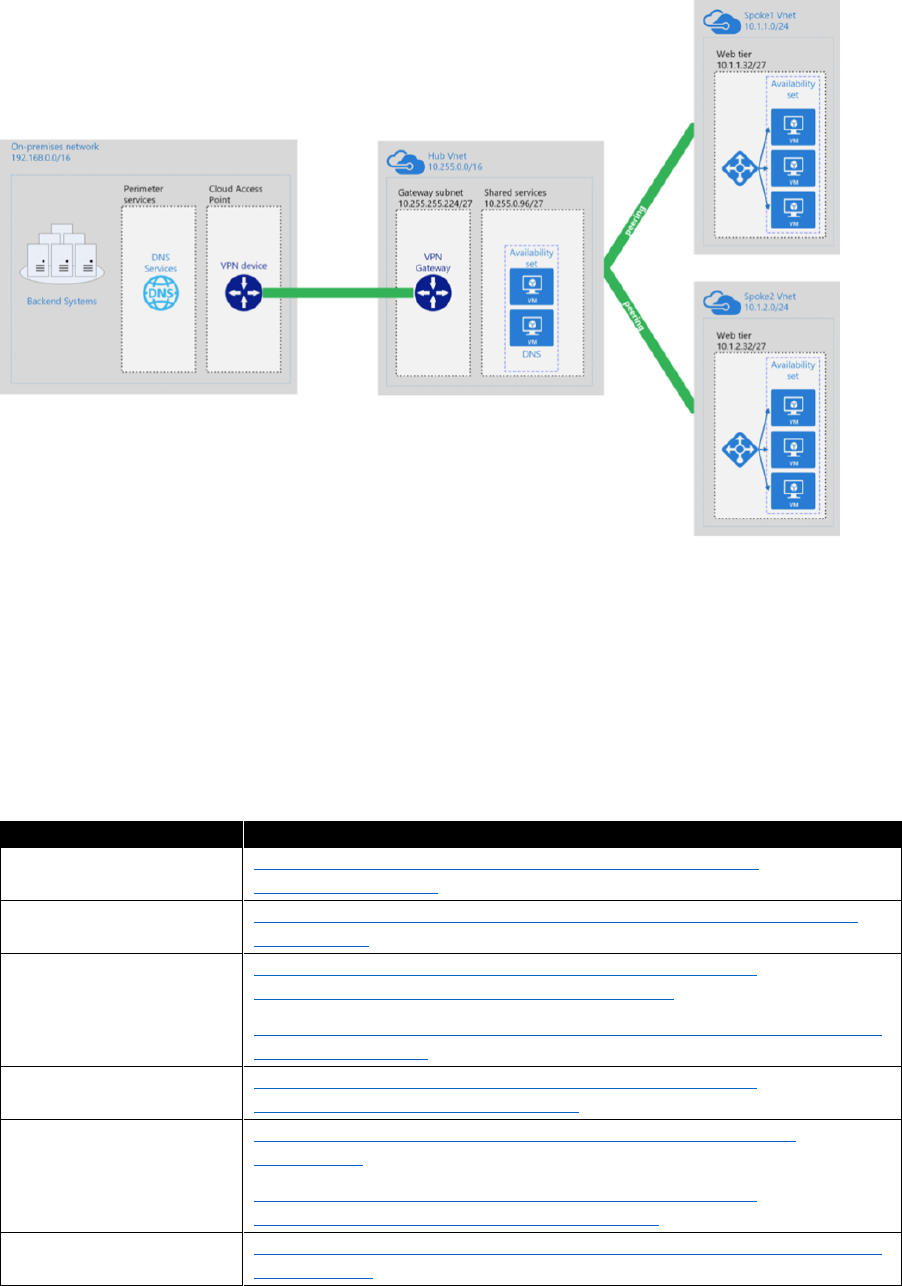

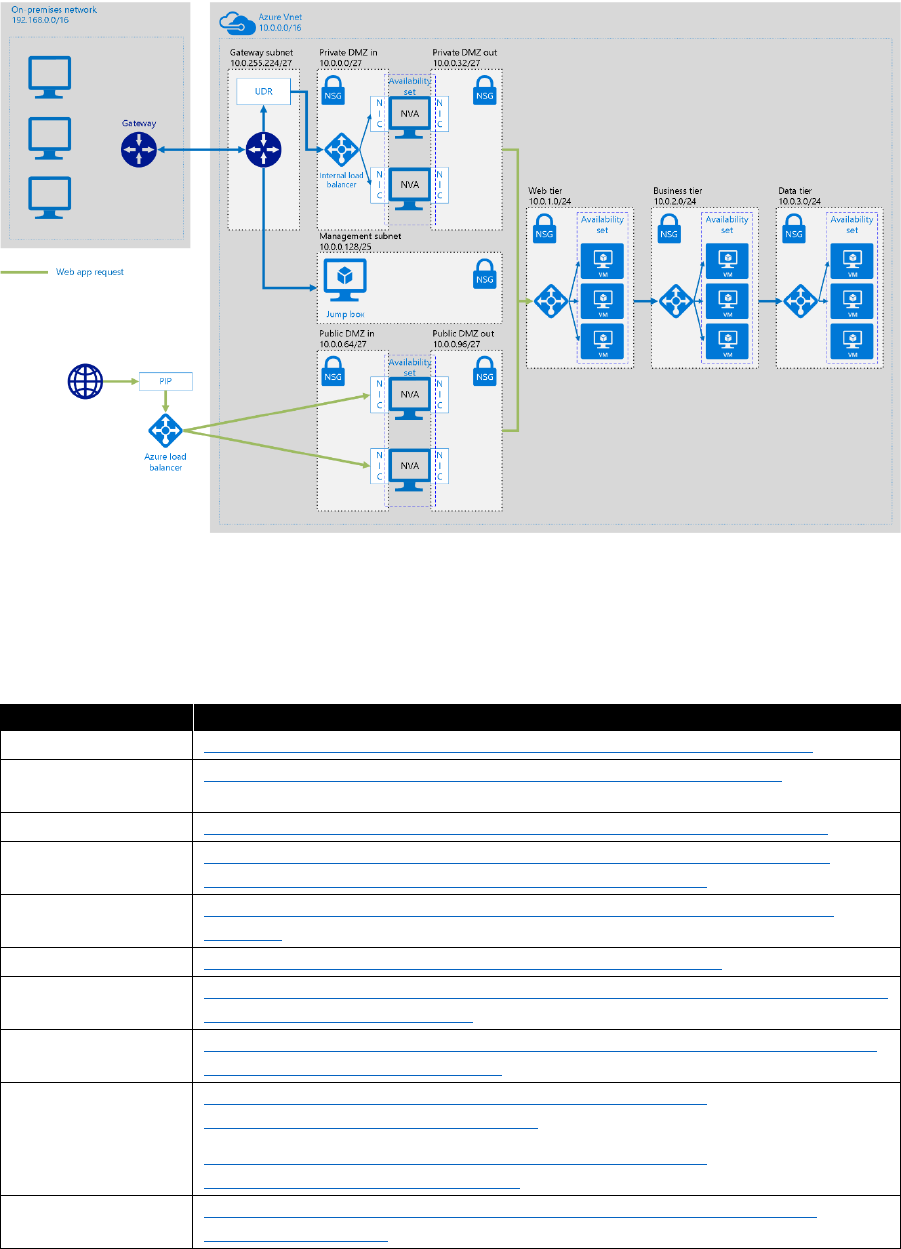

Connectivity ............................................................................................................................................................................ 35

Hybrid networking ........................................................................................................................................................... 35

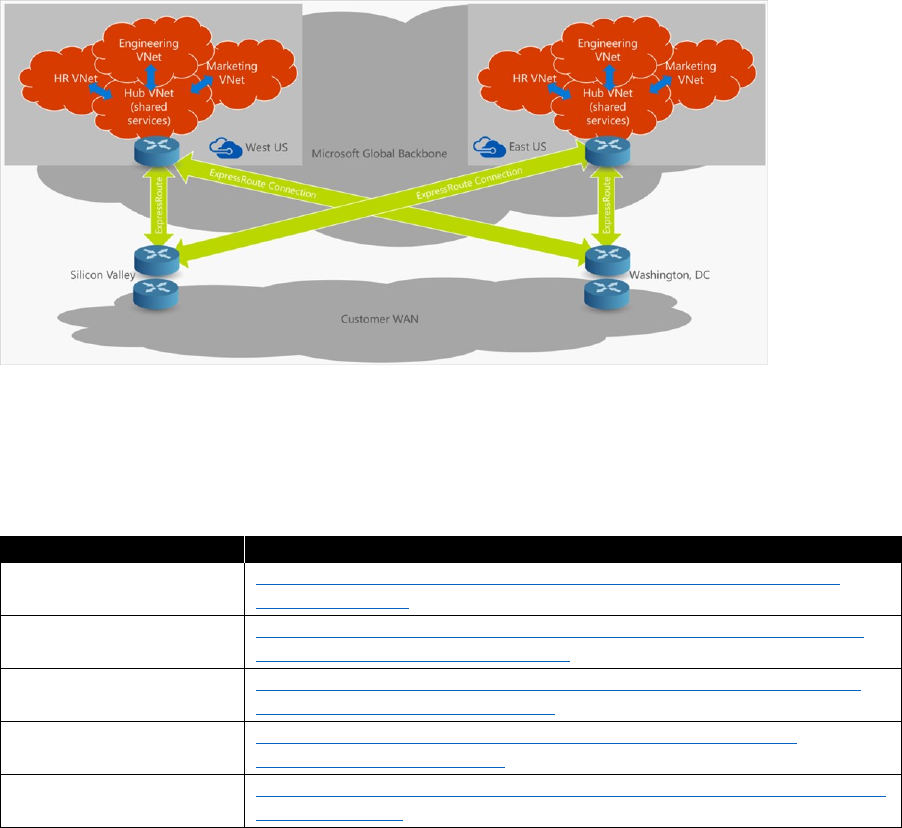

Global network design ................................................................................................................................................... 38

Network security .............................................................................................................................................................. 39

Remote access ................................................................................................................................................................... 42

Application design ............................................................................................................................................................... 42

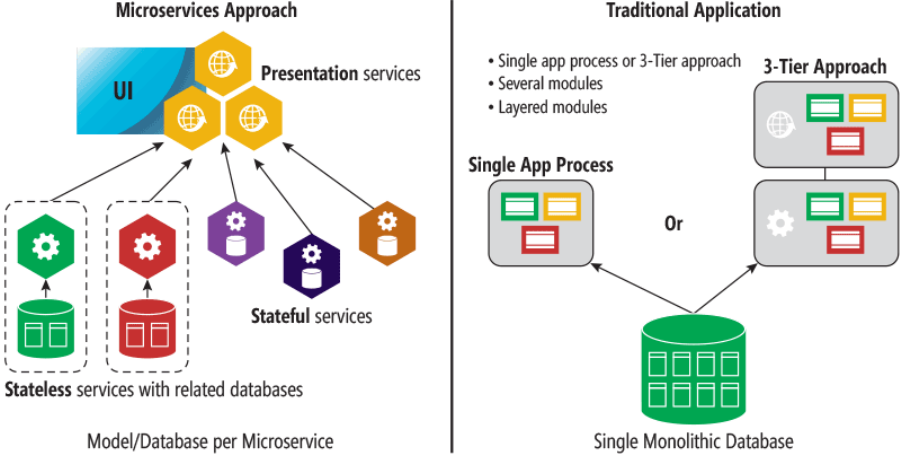

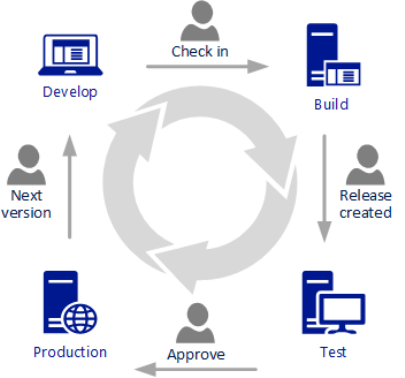

Microservices versus monolithic applications ...................................................................................................... 43

Cloud patterns ................................................................................................................................................................... 44

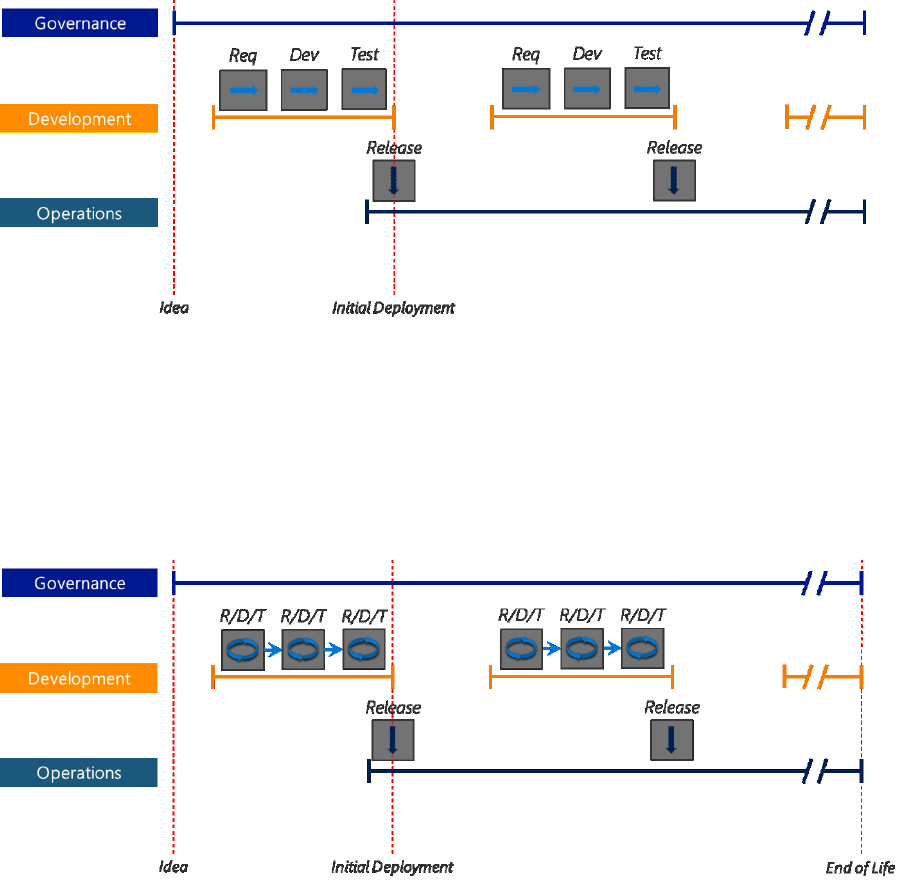

Chapter 3: Application development and operations ......................................................................... 50

Business application development ................................................................................................................................ 51

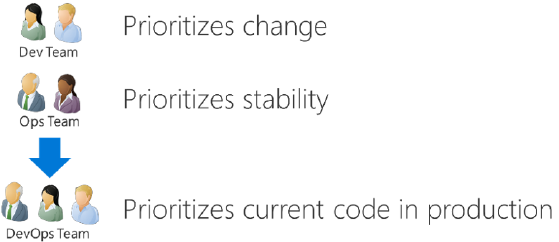

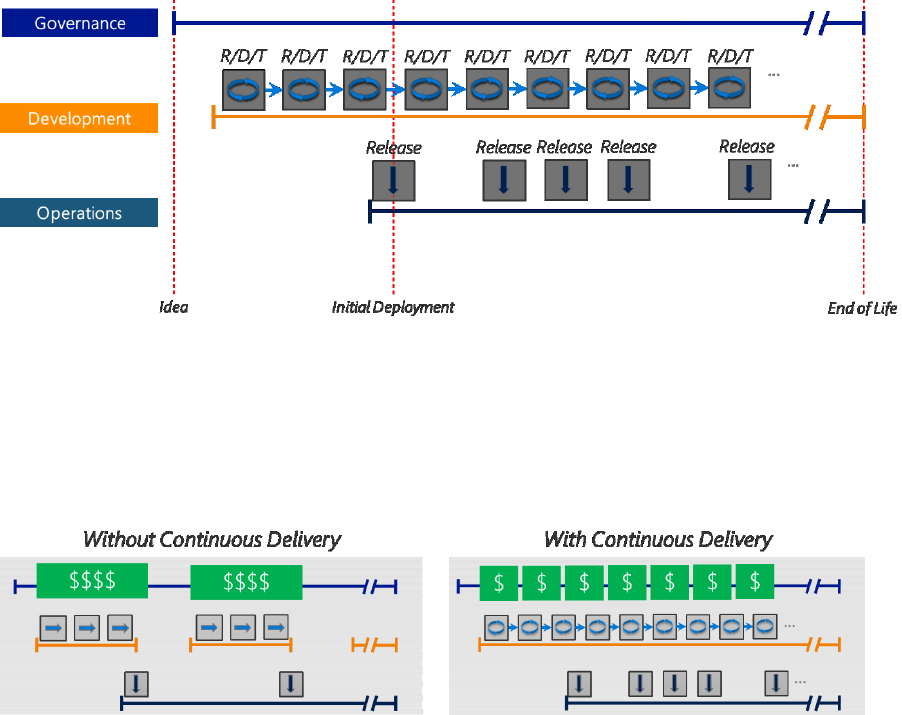

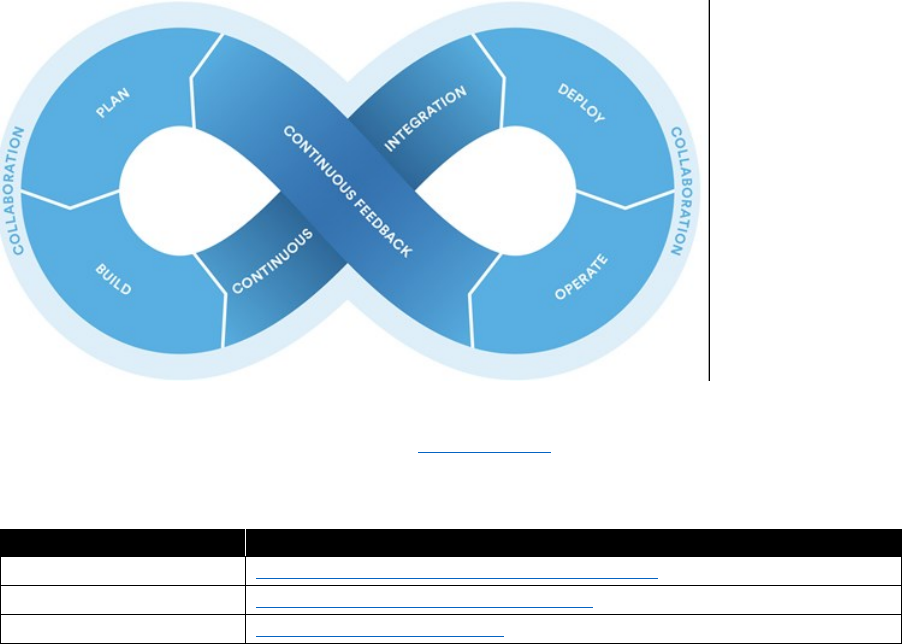

Waterfall to Agile to DevOps ...................................................................................................................................... 52

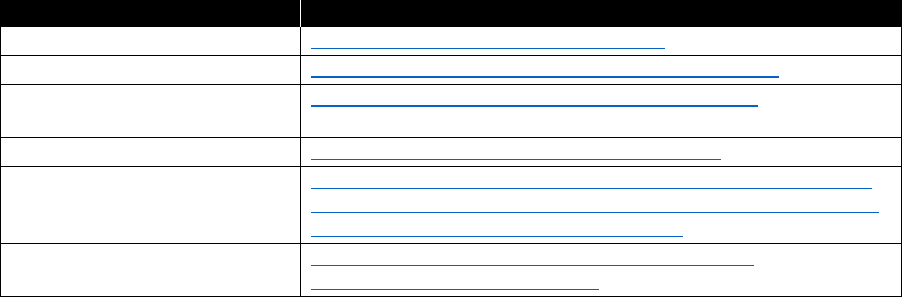

DevOps ................................................................................................................................................................................. 54

iii Contents

Moving to DevOps .......................................................................................................................................................... 63

Application operations ....................................................................................................................................................... 64

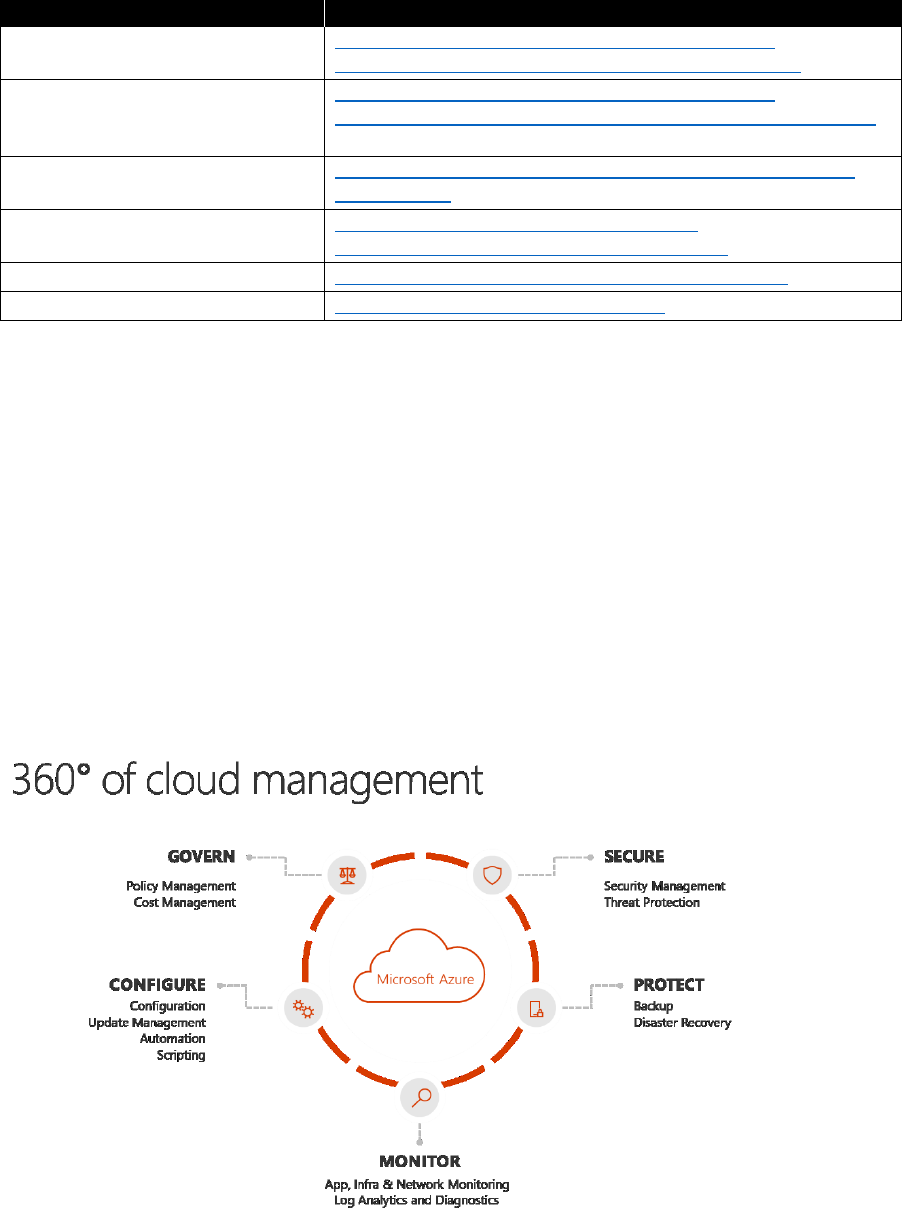

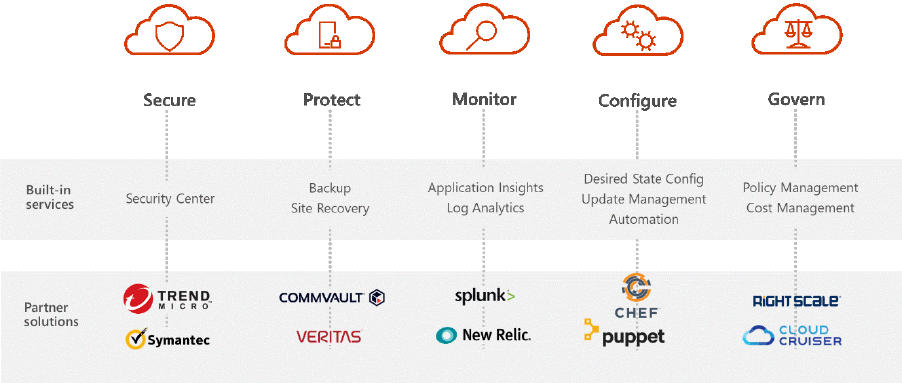

Secure ................................................................................................................................................................................... 65

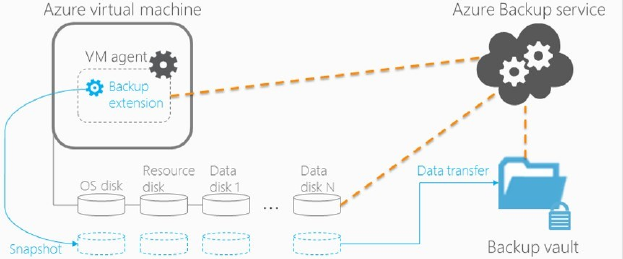

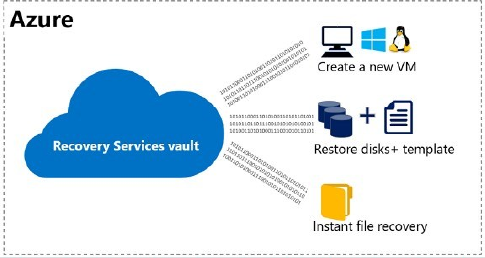

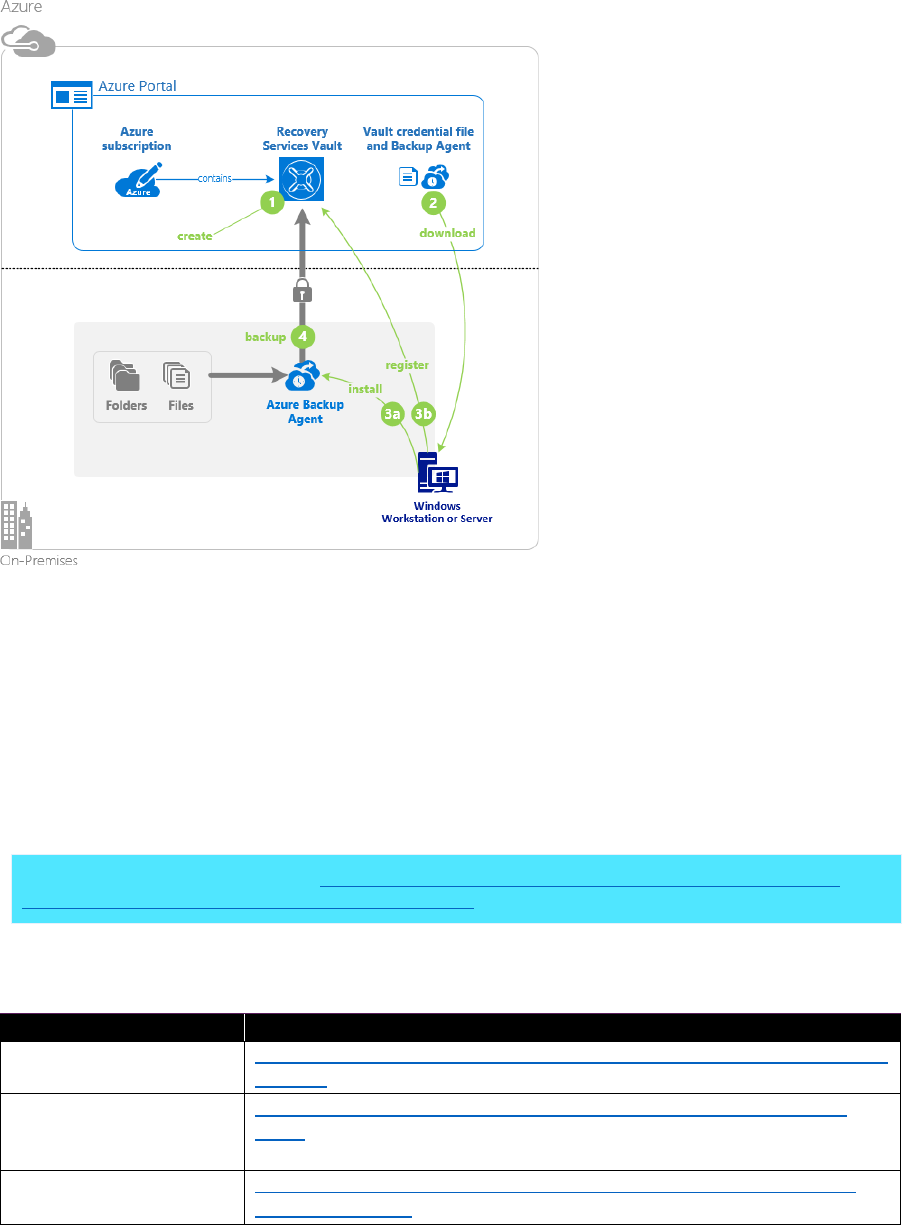

Protect .................................................................................................................................................................................. 66

Monitor ................................................................................................................................................................................ 77

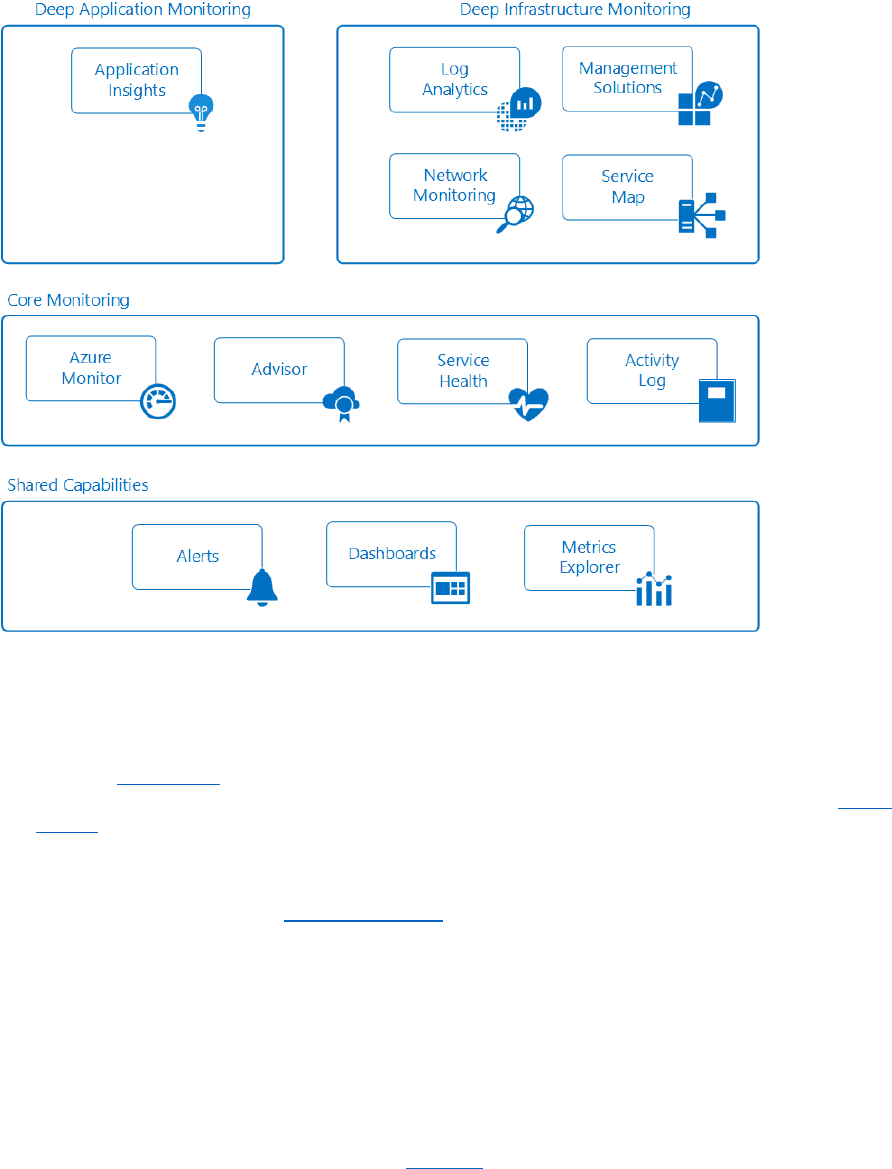

Deep monitoring services ............................................................................................................................................. 80

Integrate with IT Service Management ................................................................................................................... 85

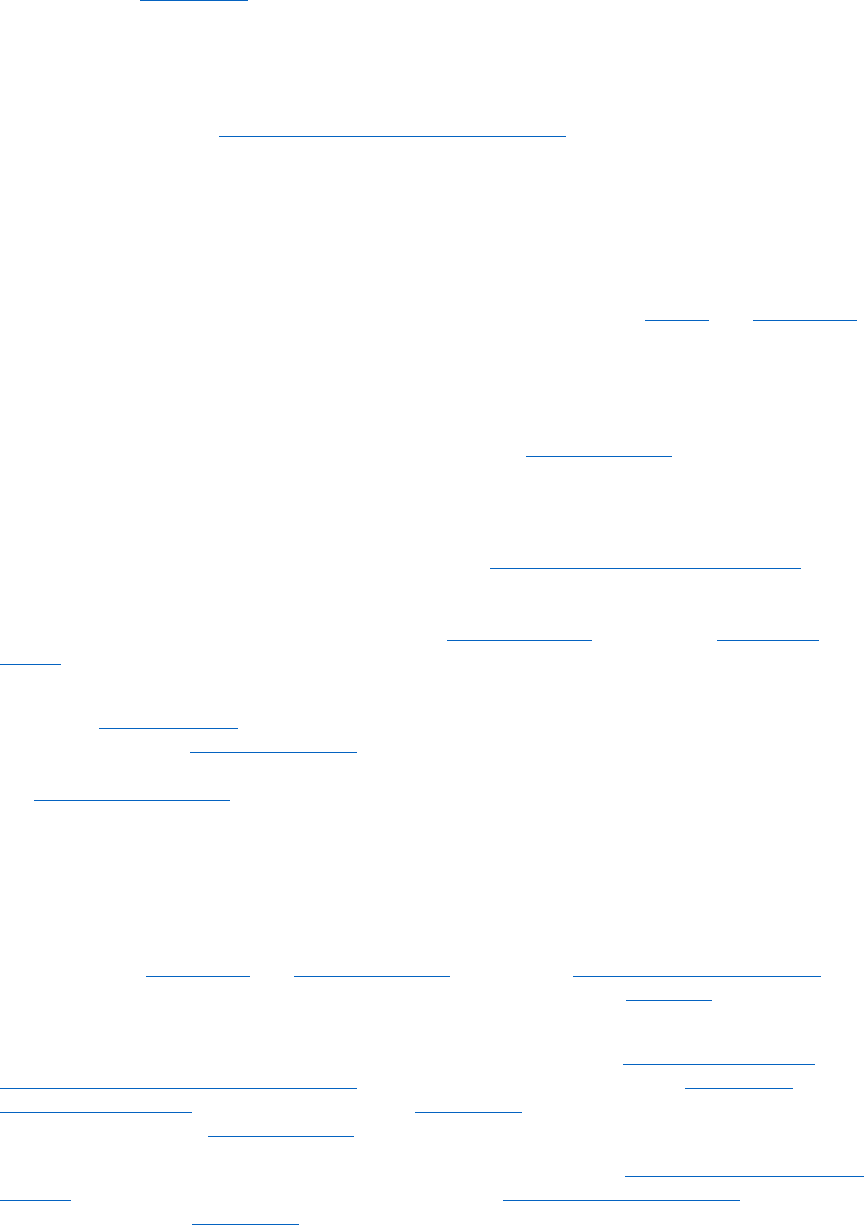

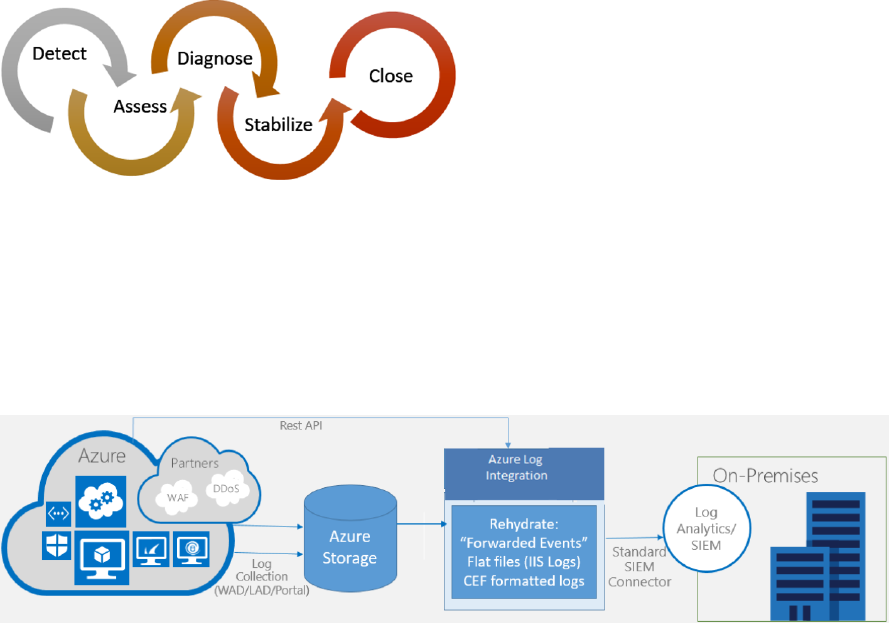

Integrate with Security Information and Event Management systems ...................................................... 85

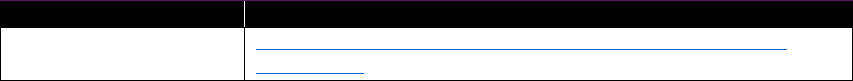

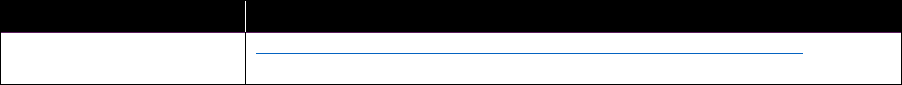

Managed services for standard and business applications ................................................................................. 90

Offering managed services .......................................................................................................................................... 91

Consuming managed services .................................................................................................................................... 92

Provisioning managed services .................................................................................................................................. 93

Metering consumption .................................................................................................................................................. 93

Billing and price prediction .......................................................................................................................................... 94

Chapter 4: Service management ............................................................................................................ 95

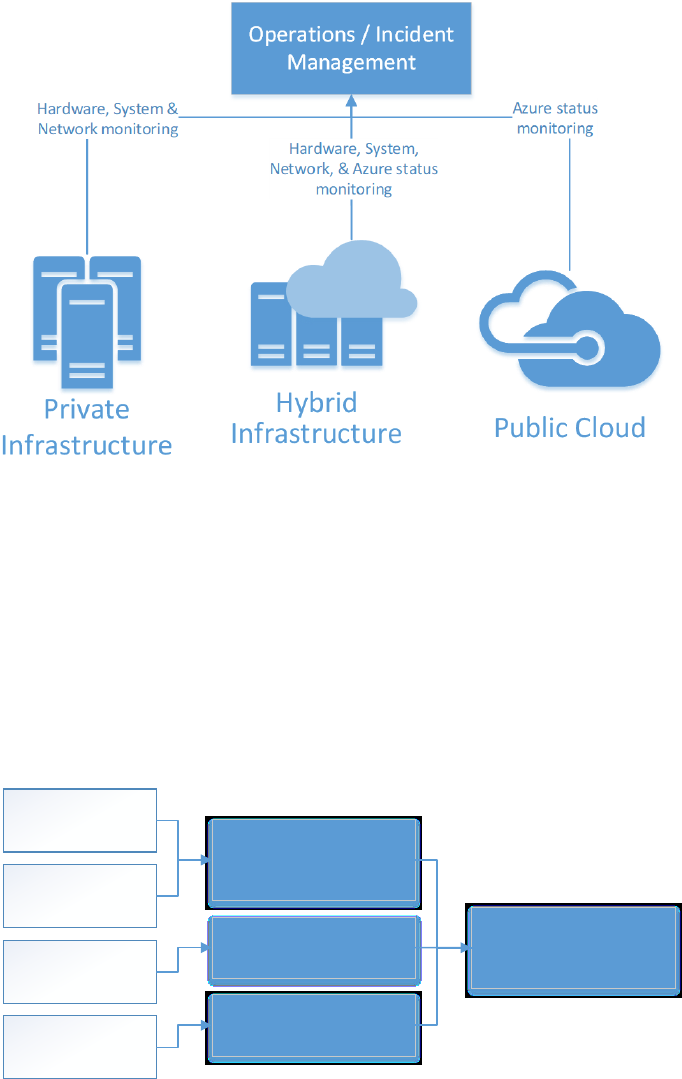

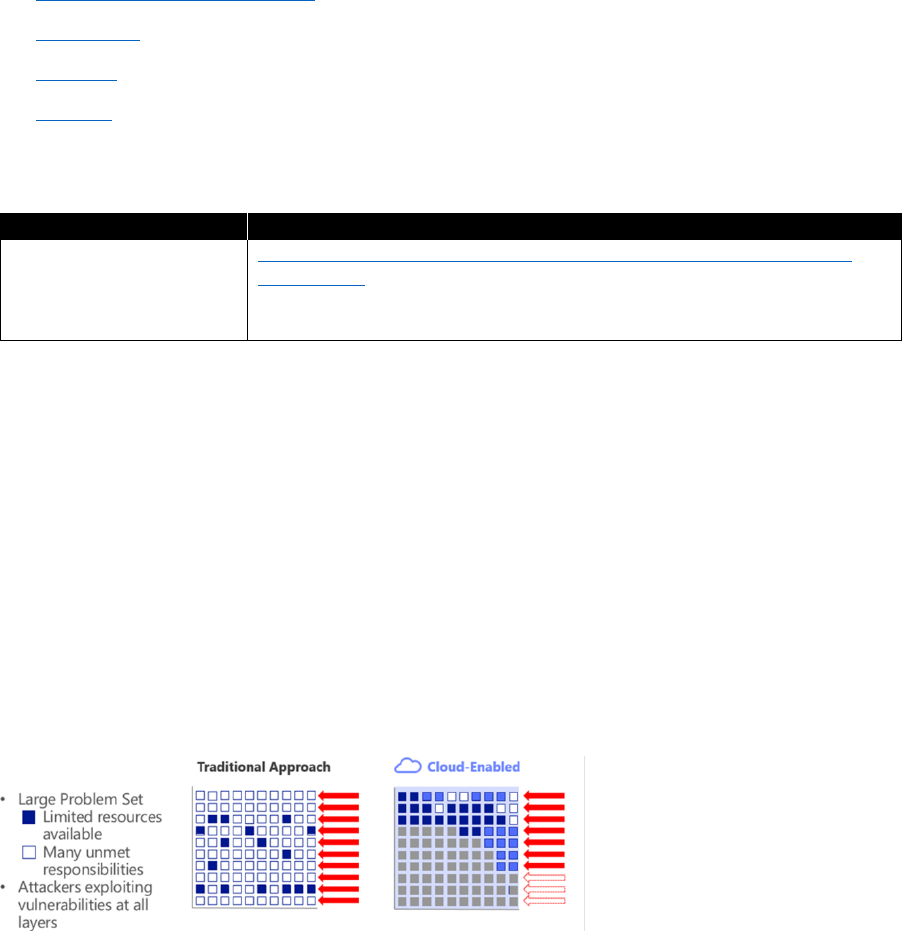

Incident management ......................................................................................................................................................... 96

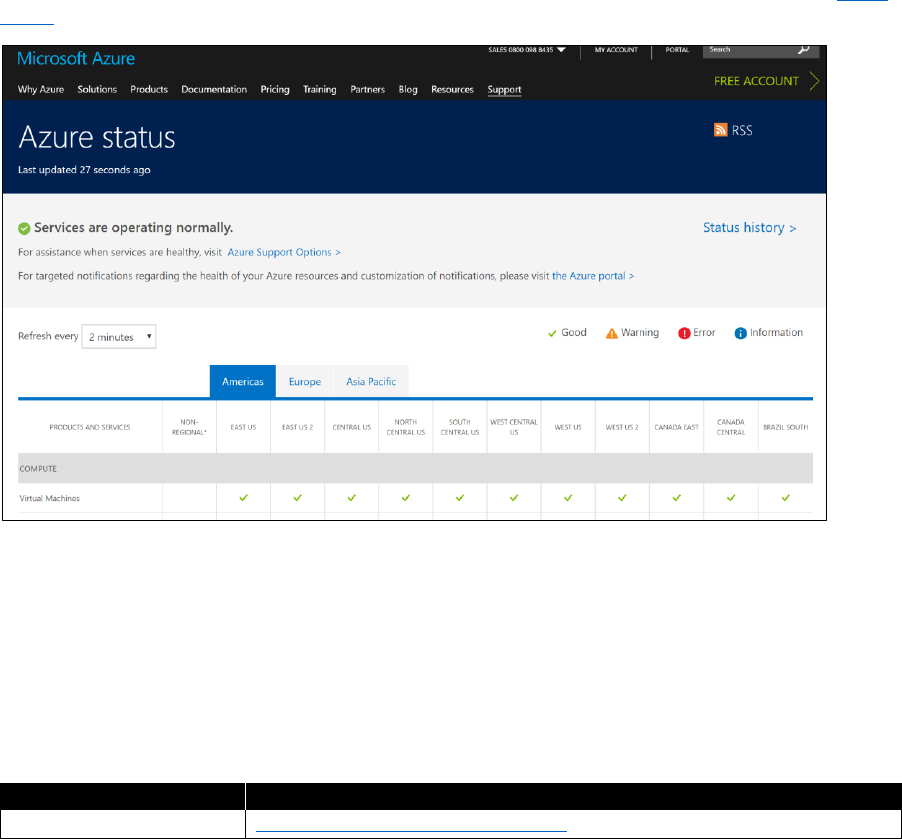

Azure status ........................................................................................................................................................................ 98

Service health .................................................................................................................................................................... 98

IT Service Management integration ....................................................................................................................... 100

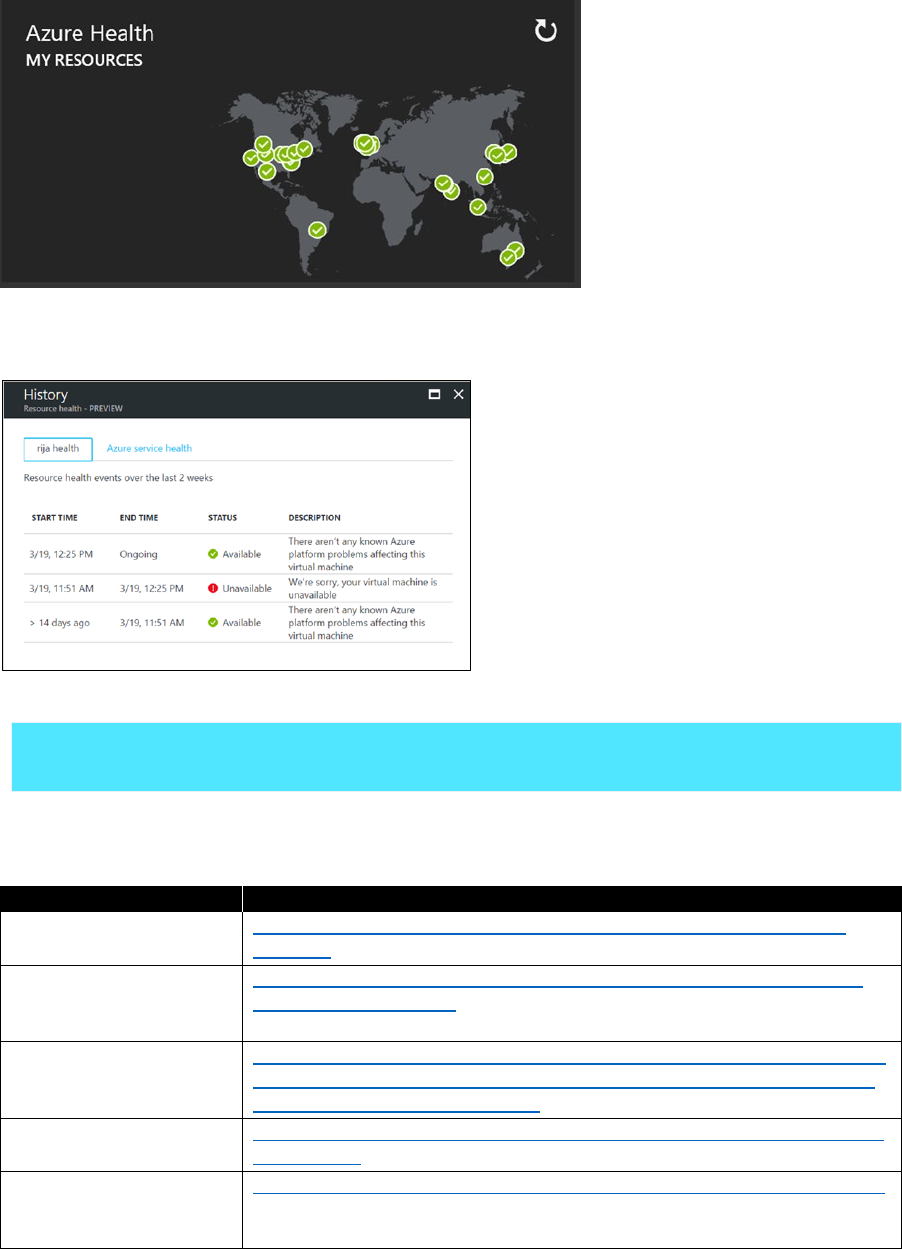

Security incidents ........................................................................................................................................................... 100

Microsoft support .......................................................................................................................................................... 102

Problem management ...................................................................................................................................................... 103

Change management ........................................................................................................................................................ 104

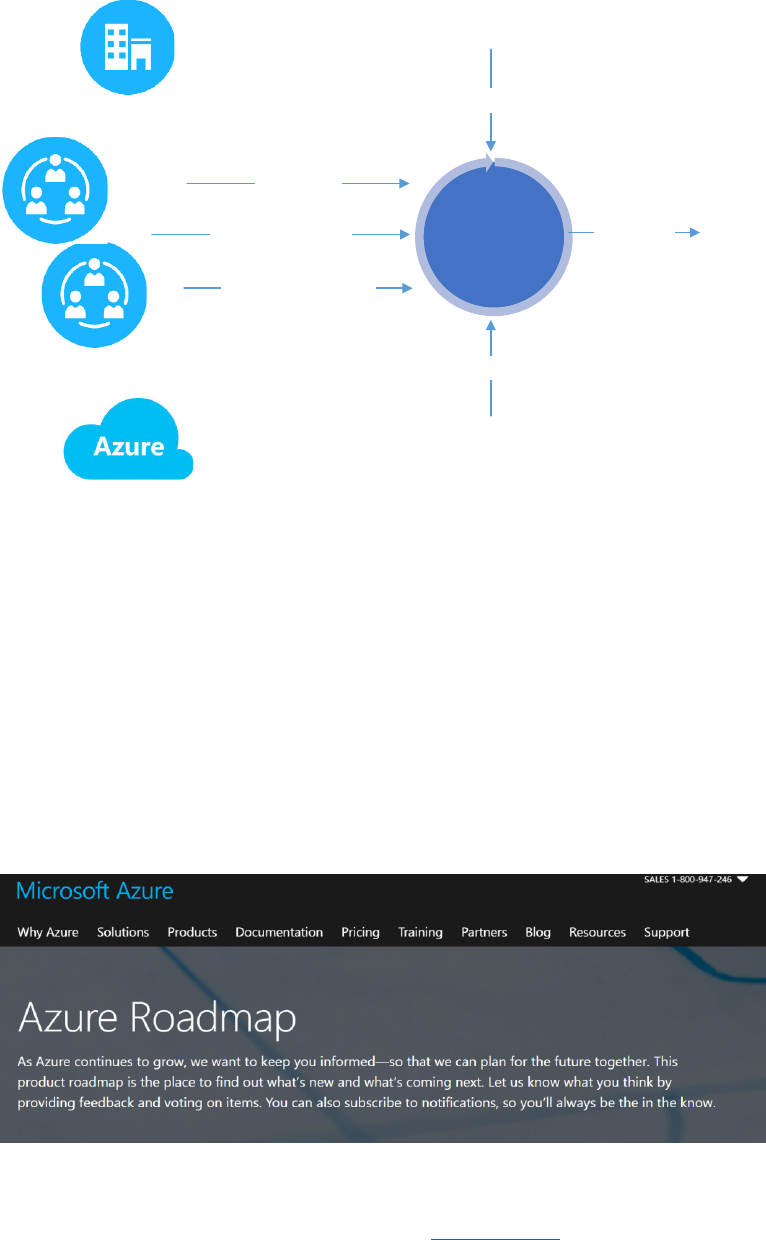

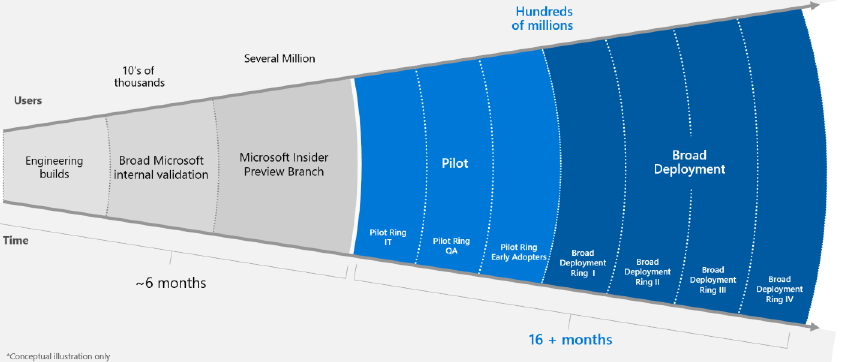

Azure platform change ................................................................................................................................................ 105

Capacity management ...................................................................................................................................................... 107

Asset and configuration management ....................................................................................................................... 108

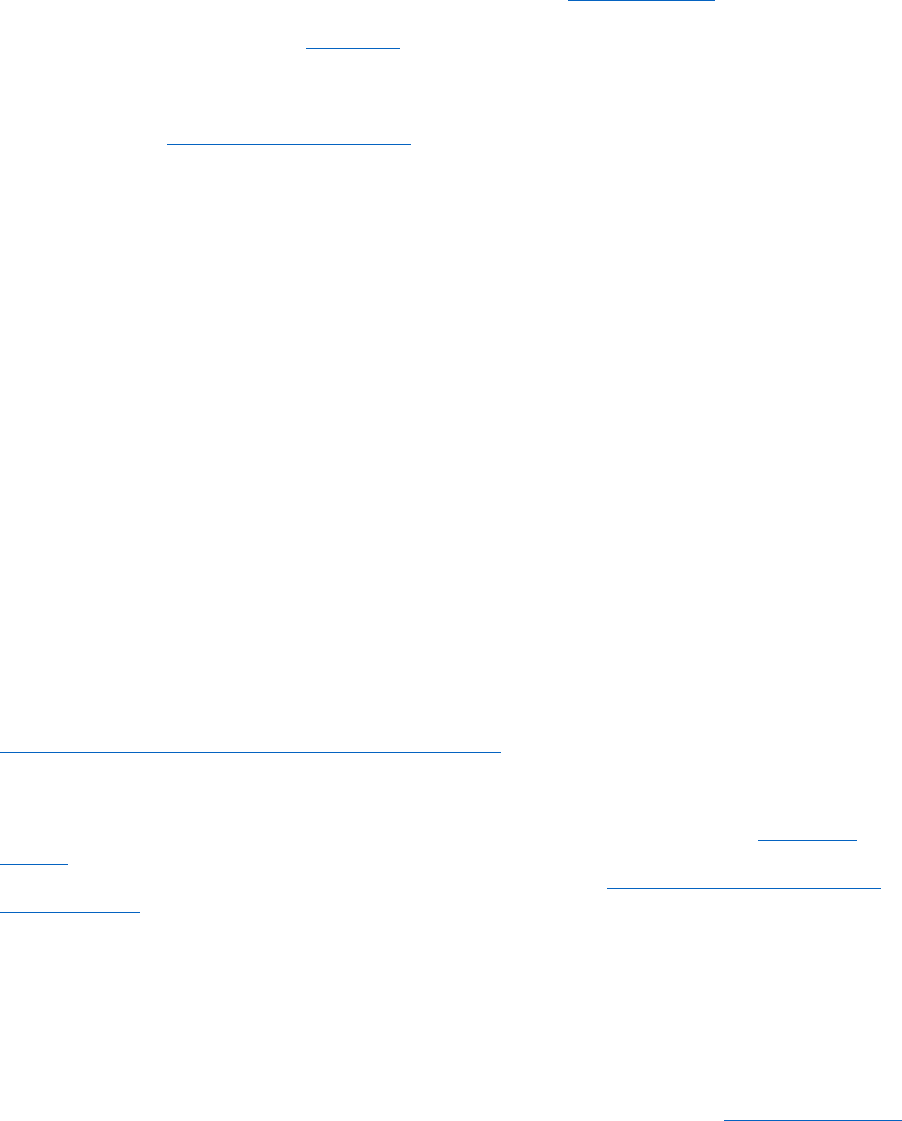

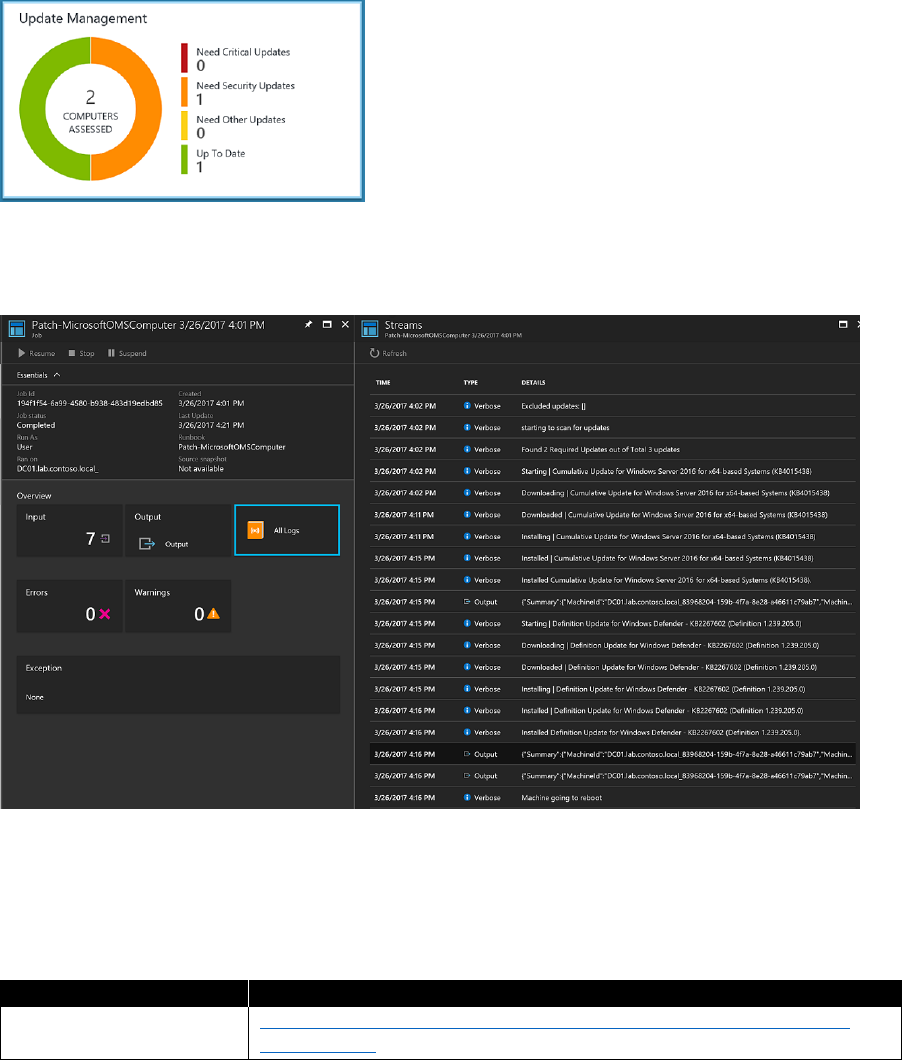

Update and patch management ................................................................................................................................... 109

Azure platform ................................................................................................................................................................ 109

Software as a service ..................................................................................................................................................... 110

Platform as a service ..................................................................................................................................................... 110

Infrastructure as a service ........................................................................................................................................... 110

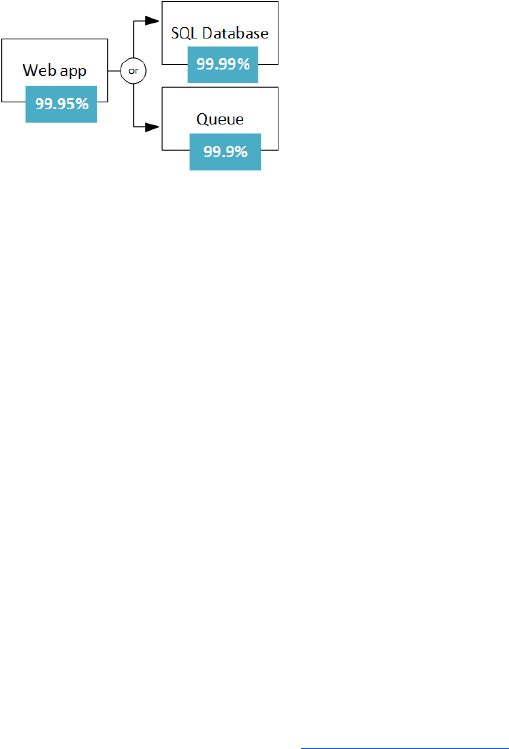

Service-level management .............................................................................................................................................. 112

Chapter 5: Conclusion ............................................................................................................................ 114

About the authors .................................................................................................................................. 115

iv Introduction

Introduction

Each organization has a unique journey to the cloud based on its own starting point, its history, its

culture, and its goals. This document is designed to meet you wherever you are on that journey and

help you build or reinforce a solid foundation to support your cloud application development and

operations, service management, and governance.

Microsoft has been on this journey for the past decade, and over the past years we have learned

important lessons by developing our own internal and customer-facing systems. We’ve also been

fortunate to work with thousands of customers on their own journeys. This book is designed to share

and distill those experiences into proactive guidance. You don’t need to follow these recommendations

to the letter, but you ignore them at your peril. Our experience has shown that a careful approach to

these topics will speed you along on your organization’s journey and avoid well-understood pitfalls.

For the past several years, we have seen consistent, explosive growth as new organizations take on the challenges associated with their individual journeys, and we have seen a shift in who engages with us, from the adventurous technician to the aggressive business transformer. A clear pattern is emerging: deep engagement in cloud computing often leads to digital transformation that drives fundamental changes in how organizations operate.

In the early stages of cloud adoption, many IT organizations feel challenged, and even threatened,

at the prospect of the journey ahead, but as those organizations engage, they undergo their own

evolution, learning new skills, evolving their roles, and in the end becoming more agile and efficient

technology providers. The result often turns what is perceived as a cost of business into a competitive

advantage that makes it possible to redefine long-believed limitations. In many cases, what emerges

are new business opportunities.

An important concept covered in this book is a strategy for identifying and moving specific workloads based on their actual value to the business. Some emerge in a new form infused with cloud design principles that were not available in the past. Others receive targeted improvements to extend their lifetimes. Still others move as-is, using the “lift and shift” approach that requires minimal change. Workloads that must remain on-premises because of latency or compliance requirements can still fully participate in the journey, because the Microsoft Cloud and the Microsoft Azure platform make it possible for an organization to run Azure services on-premises using Azure Stack.

This book is designed for decision makers who want a high-level overview of the topics as well as for IT professionals responsible for broad implementation. Regardless of whether your personal focus is infrastructure, data, or applications, there are important concepts and learnings here for you.

As you read, we hope you will gain insights into recommended general architecture to take advantage

of cloud design principles, the evolution possible in application development to DevOps, approaches

to service management, and overall governance. We are on an exciting and challenging journey

together, and we hope this document will speed you along your way!

—Britt Johnston

CTO Intelligent Cloud, Microsoft

1 CHAPTER 1 | Microsoft Azure governance

Chapter 1: Microsoft Azure governance

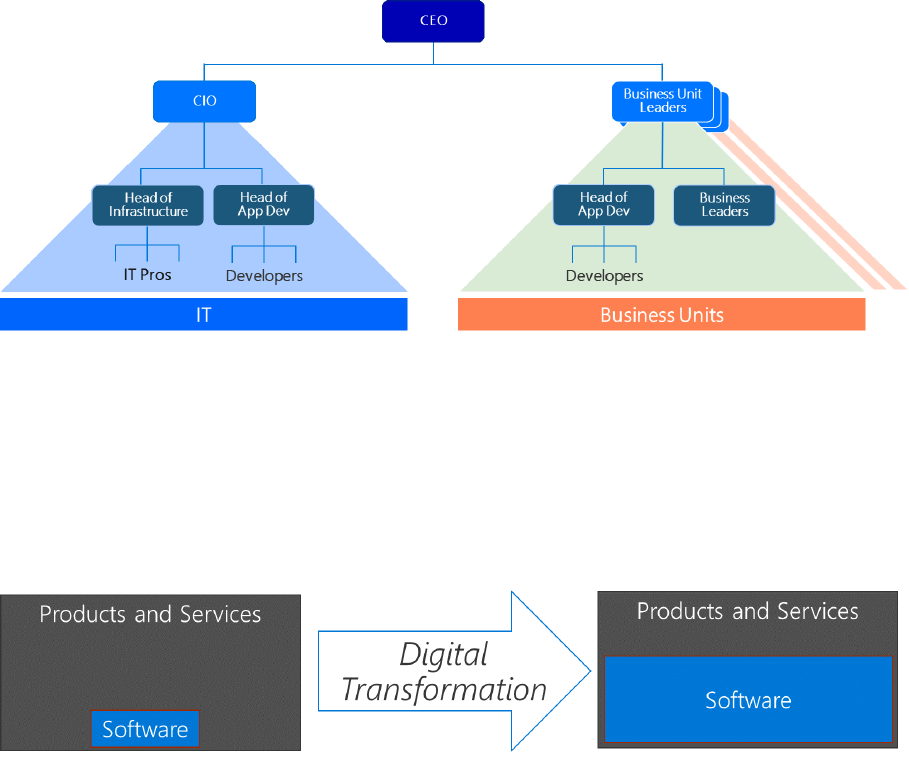

When it comes to governance, a variety of interpretations exist. For Microsoft, Azure governance has three components (Figure 1-1): Design of Governance, Execution of Governance, and Review of Governance. In this chapter, we take a look at each of these components.

Figure 1-1: Azure governance

The first component is Design of Governance. This component derives from the customer’s cloud vision, combined with the customer’s constraints, such as regulatory obligations or privacy-related data that needs to be processed. This is the “why we do things.”

The second component is Execution of Governance. This component contains all of the measures that fulfill the required needs, like reporting, encryption, policies, and so on, so that the design is backed by measures that can be implemented and controlled. This is the “how we do things.”

Finally, to ensure that all of the measures are fulfilling the intended purpose, a Review of Governance is

needed to verify that the implementation follows the design.


Cloud envisioning

Having digital transformation in mind is already the first step toward envisioning how you can use

cloud technology. The next step is to break it down into actionable steps within your enterprise.

For additional reading on the topic of cloud strategy and envisioning, we recommend Enterprise Cloud Strategy, 2nd Edition by Eduardo Kassner and Barry Briggs. You can find it at https://azure.microsoft.com/resources/enterprise-cloud-strategy/. The book explains and elaborates on actionable ideas, several of which are briefly introduced in the section that follows.

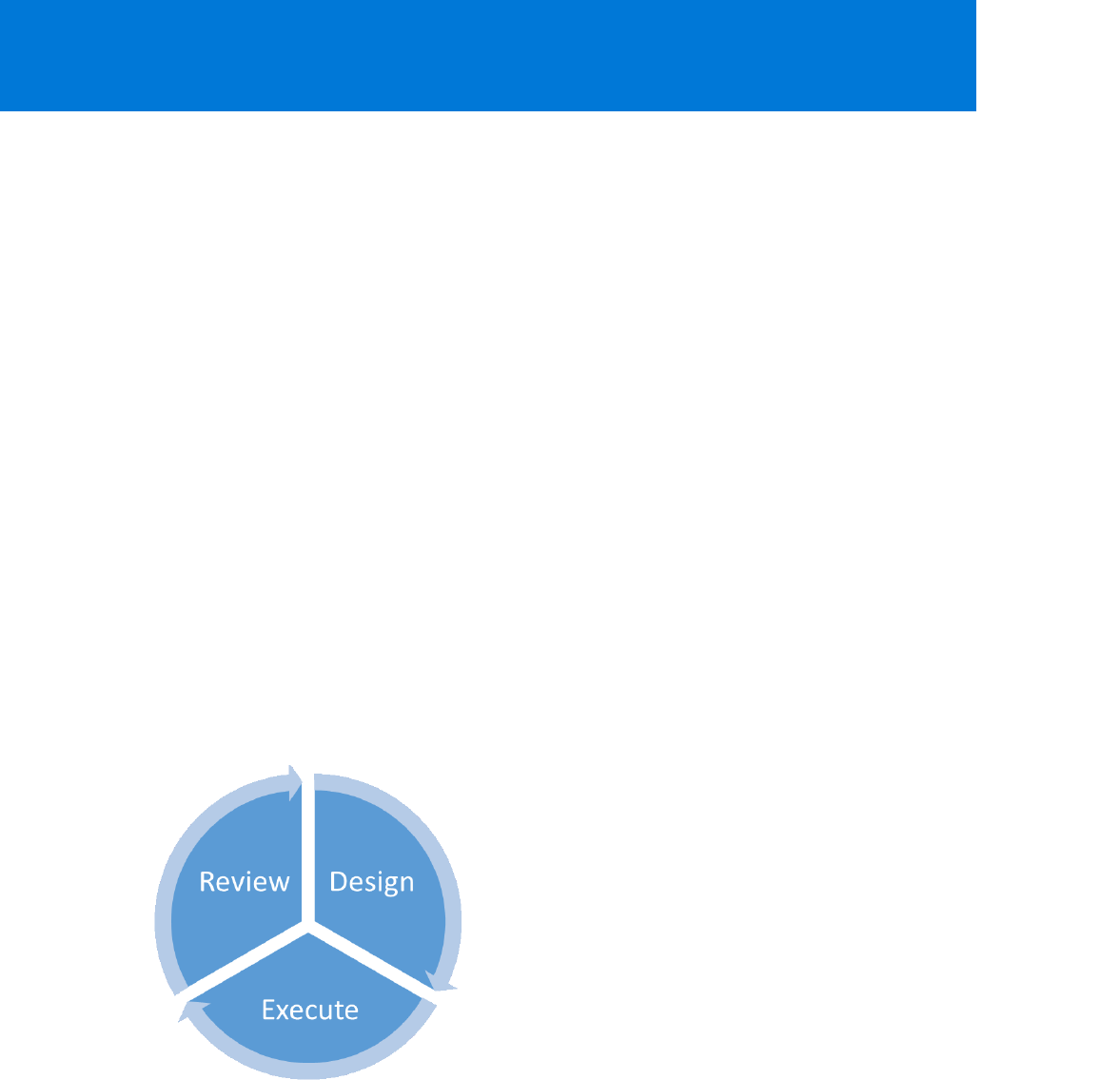

Figure 1-2 and the following list present the main pillars and how to understand them:

• Engage your customers. To deliver personalized, rich, connected experiences on journeys that

your customers choose.

• Transform your products. To keep up with your fast-moving customers, efficiently

collaborating to anticipate and meet their demands.

• Empower your employees. To increase the flow of information across your entire business

operations, better manage your resources, and keep your business processes synchronized across

all boundaries.

• Optimize your operations. To expand the reach of your business using digital channels,

anticipate customer needs, understand how your products are used, and quickly develop and

improve products and services.

Figure 1-2: Digital transformation

Each of these pillars is connected to the others. Some customers work on all pillars at the same time, whereas others work on only one pillar at a time. This depends on each customer’s strategic decisions, on the capabilities and capacities it can assign to the process, and on the defined timeline.

Top management’s task is now to name the action areas, prioritize them, assign the needed resources, and articulate the desired outcomes. This should be considered the company’s North Star: the orientation for how to get there. Some enterprises prefer the “top-down” way of defining this, whereas others engage with their workforce for the same purpose.

Examples of cloud visions are, “We want to have 50 percent of our compute power moved to the

cloud by 2020,” or, “All our new products will be completely cloud-based on DevOps methodologies

starting this fiscal year.”

If there is a shared vision that guides the company as a whole through the digital transformation, the

mission is accomplished.


Cloud readiness

After the cloud is envisioned as a means for the company’s further evolution, the next steps need

to be prepared and implemented. Here, envisioning and a clear picture can help you to keep track

of your actions and let you prioritize to achieve quick wins while keeping the focus on the digital

transformation. Cloud readiness is the next phase. But, to be certain, cloud readiness applies to more

than a traditional waterfall project with its highly structured work breakdown structure (WBS). An Agile

Scrum approach can be very successful, too, if the cloud vision and the desired outcome are well

defined.

In the sections that follow, we describe areas we’ve identified in which change might be needed for an

enterprise to handle cloud services effectively. The list is not exhaustive, and you should consider it as

a starting point. If you identify additional areas in your organization that might need to undergo

change, you are already in the driver’s seat for your digital transformation.

The next two sections focus on developing a readiness framework and on the organizational readiness needed to support a digital transformation. Following that, this e-book concentrates on more technical aspects, like Azure architecture and application development and operations. We close with the service management of Azure and an outlook.

Cloud readiness framework

A readiness framework can help you to embed your cloud activities into your existing procedures,

operational tasks, and responsibilities to make sure that you, as the enterprise, stay in control of your

cloud journey. For some companies, the creation of a readiness framework is a huge task because

their existing structures are challenged in a way that is very demanding. But that is the basic principle

of the digital transformation.

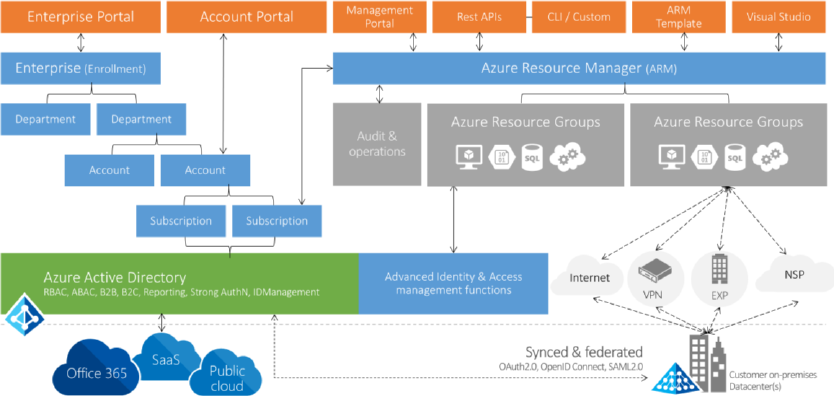

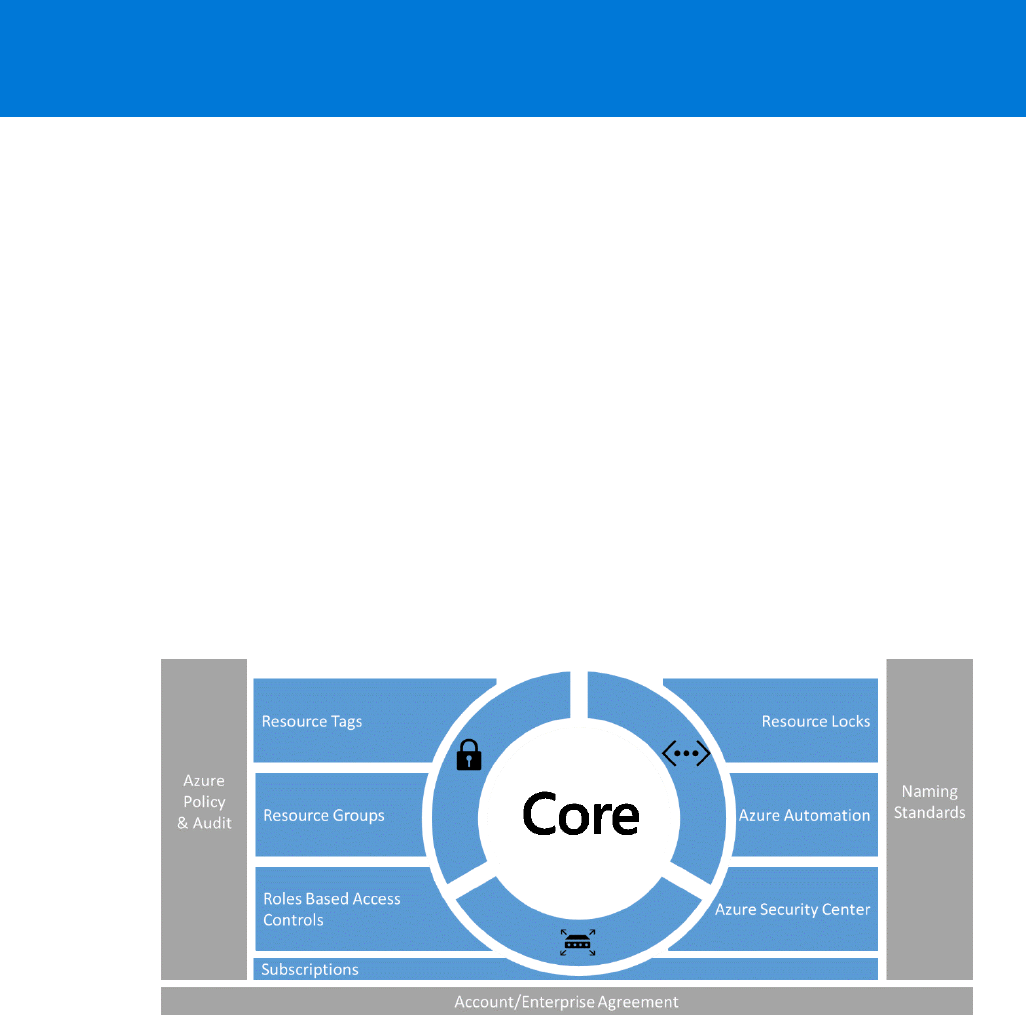

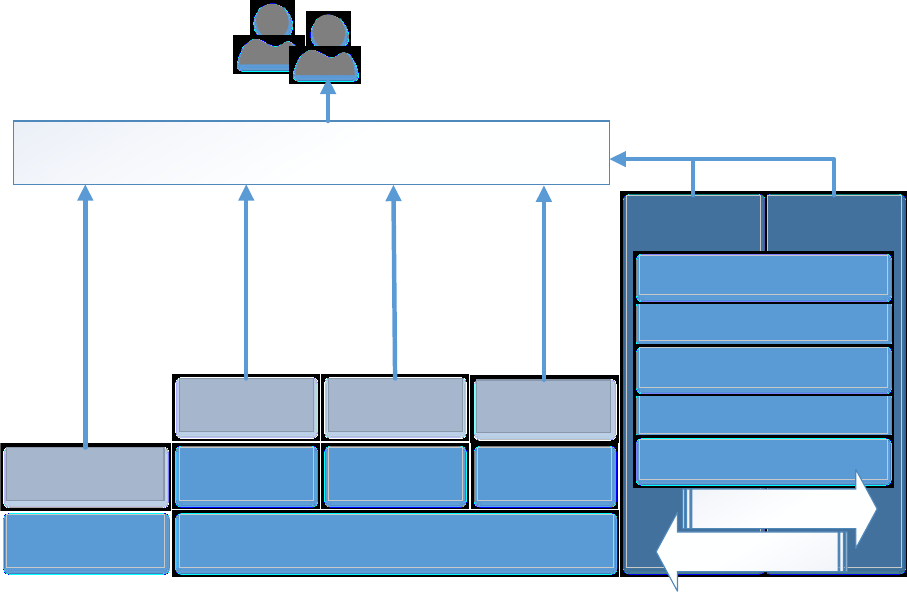

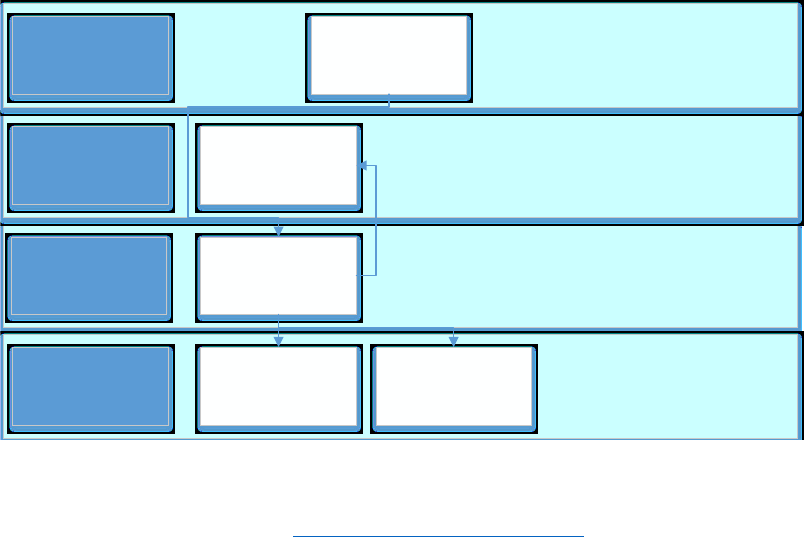

Figure 1-3 is intended to serve as guidance for the next chapters and to give you a high-level

overview of the areas that are relevant to Azure and its governance.

Figure 1-3: Cloud readiness framework

The orange blocks in the upper left, Enterprise Portal and Account Portal, with the components Enterprise, Department, Account, and Subscription below them, show the dependencies from the enterprise contractual level down to the more technical element of Azure subscriptions. This part mainly concerns the contract, the purchase, and the billing of Azure. But already we can see that there is a strong dependency on Azure Active Directory (Azure AD).


Azure AD is the identity repository for other Microsoft Cloud services like Microsoft Office 365 and Microsoft Dynamics 365. Many enterprises choose to synchronize all or a major part of their on-premises Active Directory with Azure AD. Microsoft’s recommended technology for this is Azure Active Directory Connect (Azure AD Connect), which is free of charge. In this way, companies remain in control of their corporate identities. A combination with federation services is possible and very often used as a means of stronger control.

With this level of control, the resources and the different ways to interact with Azure are securely accessible through the common interface of the Azure Resource Manager layer.

Azure resource groups are the main structure in which all of your resources are grouped together, for example, virtual machines (VMs), Azure storage, and platform services like Azure Machine Learning.
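The containment hierarchy described above (enterprise, department, account, subscription, resource group, resource) can be pictured as a simple data model. The following Python sketch is illustrative only: the class names, field names, and sample values are assumptions made for this example and do not represent an Azure API.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Resource:
    name: str
    kind: str  # illustrative label, for example "virtualMachine"


@dataclass
class ResourceGroup:
    name: str
    resources: List[Resource] = field(default_factory=list)


@dataclass
class Subscription:
    name: str
    resource_groups: List[ResourceGroup] = field(default_factory=list)


@dataclass
class Account:
    owner: str
    subscriptions: List[Subscription] = field(default_factory=list)


@dataclass
class Department:
    name: str
    accounts: List[Account] = field(default_factory=list)


@dataclass
class Enterprise:
    name: str
    departments: List[Department] = field(default_factory=list)


def resource_paths(enterprise: Enterprise) -> List[str]:
    """Return one slash-separated path per resource, from enterprise down to resource."""
    paths = []
    for dept in enterprise.departments:
        for acct in dept.accounts:
            for sub in acct.subscriptions:
                for group in sub.resource_groups:
                    for res in group.resources:
                        parts = [enterprise.name, dept.name, acct.owner,
                                 sub.name, group.name, res.name]
                        paths.append("/".join(parts))
    return paths


# Hypothetical sample data; every name below is invented for illustration.
reporting = ResourceGroup("rg-reporting", [
    Resource("vm-report-01", "virtualMachine"),
    Resource("streports", "storageAccount"),
])
example = Enterprise("Contoso", [
    Department("Finance", [
        Account("owner@contoso.example", [
            Subscription("finance-prod", [reporting]),
        ]),
    ]),
])

for path in resource_paths(example):
    print(path)
```

Walking the hierarchy this way shows why contract-level decisions (departments, accounts) and technical decisions (subscriptions, resource groups) cannot be planned in isolation: every resource inherits its position in both.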

Access to these resources is possible over the internet, through secure channels like Virtual Private Networks (VPNs), or through Azure ExpressRoute when a dedicated private connection, such as Multiprotocol Label Switching (MPLS), with high-bandwidth options and Service-Level Agreements (SLAs) is in place.

Development operations model cloud services

One part of the framework is an operations model that is fit for the purpose of cloud services. The crucial point for many customers is the shift away from an outsourcing model, often years long and with a huge number of infrastructure components, to an Agile model with a blend of infrastructure as a service (IaaS), platform as a service (PaaS), and software as a service (SaaS). To be clear, a cloud provider is worlds apart from an outsourcing provider. To be successful, the customers we have seen needed to evolve toward a new way of interacting and of defining the responsibilities for operating their new services in the cloud.

Customers that have successfully gone that way interacted early with their outsourcing providers and worked out new models of a managed cloud service that those providers could offer instead of the outsourcing model.

Chapter 3 provides more information regarding DevOps, operations, and other related topics.

Cost and order management

To achieve cost transparency and to be able to assign cost alerts and limits, adopting companies must integrate the Azure monetary model into their processes. We cannot describe all of the requirements here, and they can change in future versions of Azure. Sample requirements are access to Azure usage data and Azure rate cards. These are supported through the Azure Billing API and Azure Cost Management. You can use the Billing API to build your own billing solution. Typical scenarios include the following:

• Track Azure spend during the month

• Set up alerts

• Predict the bill

• Preconsumption cost analysis

• What-if analysis
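The scenarios above can be sketched with a few lines of arithmetic once usage quantities and a rate card are available. The following Python example is a minimal sketch under stated assumptions: the meter names, prices, usage records, and function names are all invented for illustration, the projection is a naive linear extrapolation, and no real Billing API call is made.

```python
import calendar
from datetime import date

# Hypothetical rate card: price per unit for each meter (invented values).
RATE_CARD = {"vm-hours": 0.10, "storage-gb": 0.02}

# Hypothetical usage records so far this month: (day, meter, quantity).
USAGE = [
    (date(2018, 3, 1), "vm-hours", 240.0),
    (date(2018, 3, 2), "vm-hours", 240.0),
    (date(2018, 3, 2), "storage-gb", 500.0),
]


def spend_to_date(usage, rate_card):
    """Spend during the month: sum of quantity times unit price."""
    return sum(qty * rate_card[meter] for _, meter, qty in usage)


def predict_bill(usage, rate_card, today):
    """Naive bill prediction: extrapolate the average daily spend to month end."""
    days_in_month = calendar.monthrange(today.year, today.month)[1]
    return spend_to_date(usage, rate_card) / today.day * days_in_month


def budget_alert(projected, budget, threshold=0.8):
    """Alert when the projected bill reaches a fraction of the budget."""
    return projected >= budget * threshold


def what_if(usage, rate_card, meter, factor):
    """What-if analysis: recompute spend with one meter's usage scaled."""
    scaled = [(d, m, q * factor if m == meter else q) for d, m, q in usage]
    return spend_to_date(scaled, rate_card)


today = date(2018, 3, 2)
projected = predict_bill(USAGE, RATE_CARD, today)
print("spend so far:", round(spend_to_date(USAGE, RATE_CARD), 2))
print("projected bill:", round(projected, 2))
print("alert:", budget_alert(projected, budget=1000.0))
print("spend with half the VM hours:", round(what_if(USAGE, RATE_CARD, "vm-hours", 0.5), 2))
```

A preconsumption cost analysis follows the same pattern: pass a list of planned usage records instead of actual ones to `spend_to_date`.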

Azure Cost Management—powered by Cloudyn, which was acquired by Microsoft in July 2017—

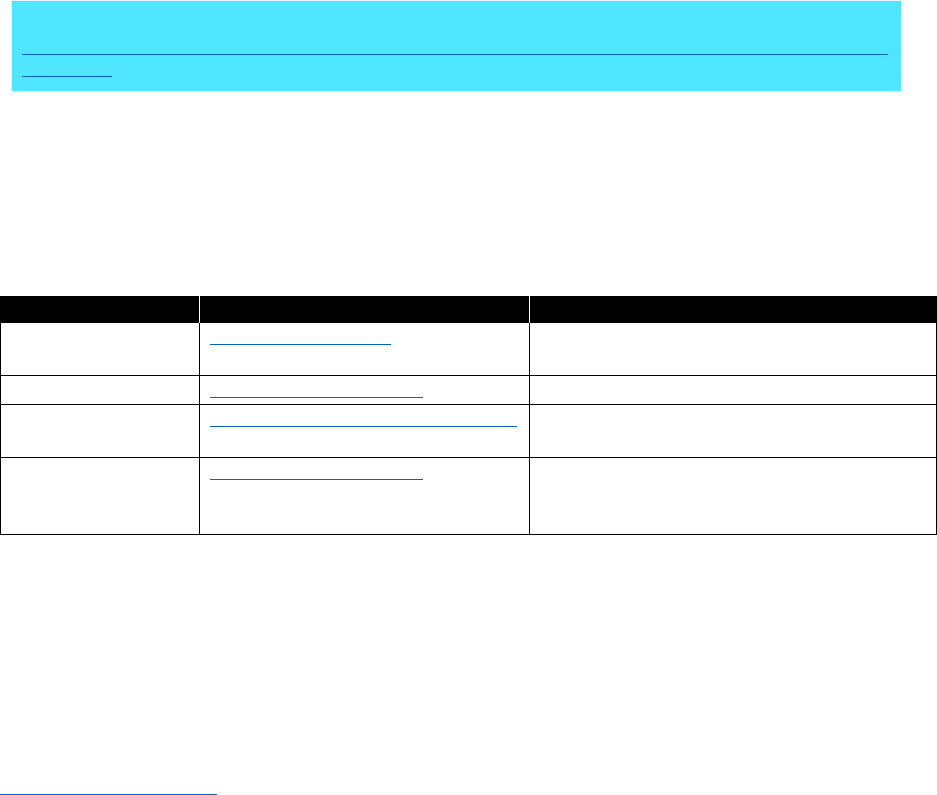

focuses on three main areas of the customer’s cloud business. Table 1-1 lists these areas and their

features.

5 CHAPTER 1 | Microsoft Azure governance

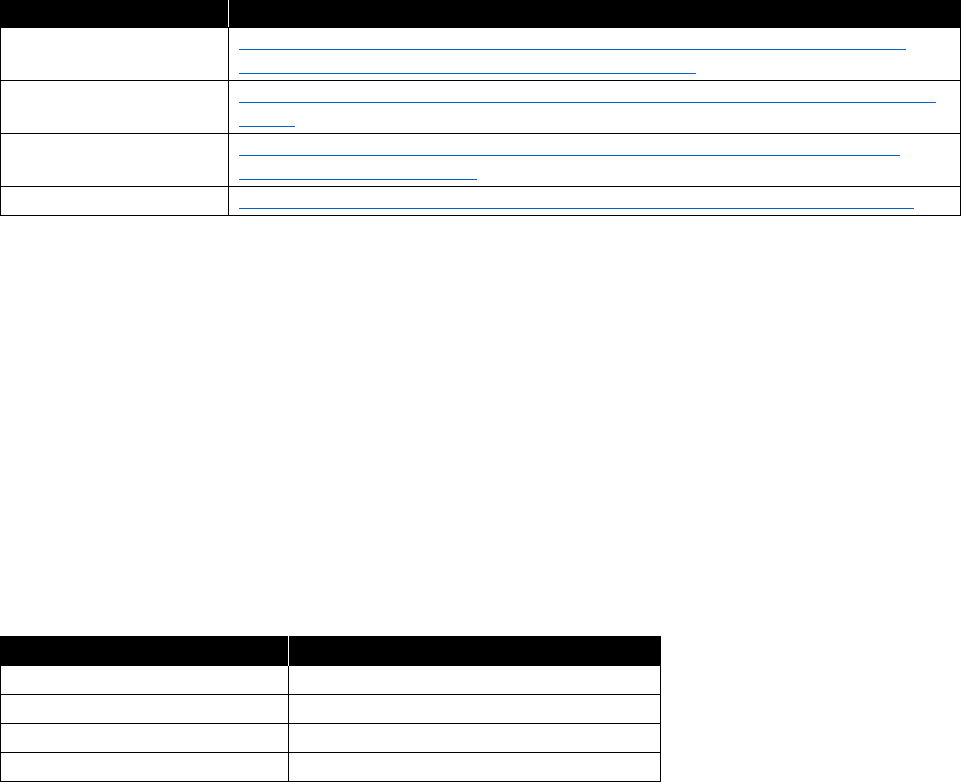

Table 1-1: Azure Cost Management features

| Focus area | Features |
| --- | --- |
| Gain real-time visibility to the Azure cloud environment | Keep track of upfront compute commitments and fees compared with actual consumption |
| | Reconcile prepay commitments with billing payments |
| | Verify Enterprise Agreement (EA) discounts with actual bills |
| | Stay on top of expiring resources and agreements |
| Empower enterprise-wide cloud accountability | Facilitate accurate cost allocation and chargeback across your enterprise entities including subscriptions, accounts, departments and cost centers |
| | Implement your own cost allocation method: blended/average/normalized rates, RI (Reserved Instance) autonomous rates, or any other policy of your choice |
| | Assure RI autonomy: assign zero costs to RI owners and add On-Demand costs to the departments that used external/borrowed RIs |
| | Track Azure Resource Manager groups’ tags for simplified cost allocation |
| Drive Azure cost management and optimization | Monitor your VMs’ performance-to-price ratio and receive actionable recommendations to maximize usage |
| | Calculate your most cost-effective upfront monetary and usage commitment |
| | Release unused Reserved IP addresses of stopped instances |
| | Dispose unattached block-blob storage volumes |
| | Apply changes directly through the Azure REST API |

Another approach is to use a third-party solution such as CloudCruiser.

Procurement (Provider to Azure customer)

Another area that will change with cloud adoption is the company’s procurement. Ordering cloud

services is very different from ordering boxes of software or buying blocks of licenses. It begins with

adding licenses as needed; negotiating the EA; and understanding the subscription model of Azure,

Office 365, and other cloud services from Microsoft.

Therefore, the procurement department needs to be a first-class citizen of the cloud-ready world. It begins by making the procurement team aware of the changes in the products and the way in which they are purchased. You must change existing processes, which are based on buying boxed software and assigning it to cost centers, into processes for purchasing and maintaining one or many Azure subscriptions with a possibly dynamic monthly cost assigned to projects or cost centers. The as-yet-undetermined amount of money that needs to be allocated to the project or cost center is a particular challenge for every customer.

Most enterprise customers are already acquainted with the contractual construct of a Microsoft

Enterprise Agreement (EA). Additionally, Azure offers the Pay-As-You-Go model. This model includes

no commitment, and you pay only for the services that you actually consume. Payment is handled via

credit card or debit card. Because this is not controllable from a technical perspective, most customers

prohibit using company credit cards for cloud services. Also, expensing cloud services is very often

prohibited by company internal regulations. Your Microsoft sales representative can help you to find

the appropriate option for your situation.


Order (Internal customer of Azure customer)

Some customers purchase Microsoft Cloud services like Azure and Office 365 via a central group or

department and then charge individual departments for services consumed. To carry out this type of

purchasing, the customer needs to develop and implement the needed infrastructure and

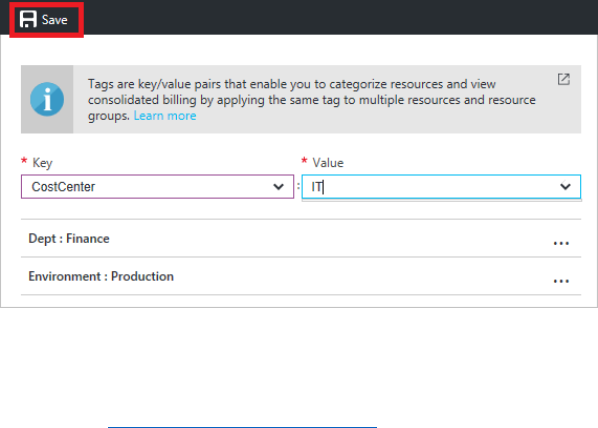

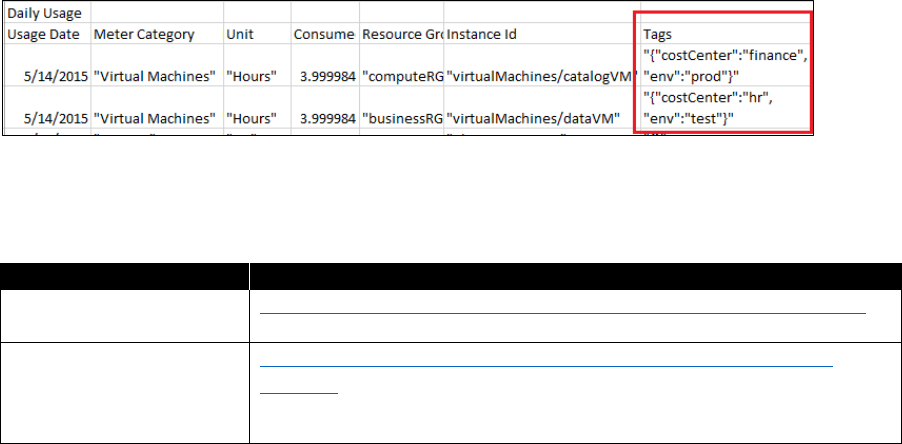

organizational processes. Technically, Azure supports this kind of cross-charging by optionally

“tagging” Azure resources. These tags are visible on the monthly statement that is issued to the

customer. The statement is also available as an Excel file.
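Tag-based cross-charging amounts to grouping statement line items by a tag value. The following Python sketch shows the idea. It is not the format of the actual Azure statement: the line-item structure, the `costCenter` tag name, and all values are assumptions made for this example.

```python
from collections import defaultdict

# Hypothetical line items, loosely modeled on an exported monthly statement.
LINE_ITEMS = [
    {"resource": "vm-web-01", "cost": 120.0, "tags": {"costCenter": "CC-100"}},
    {"resource": "sql-orders", "cost": 300.0, "tags": {"costCenter": "CC-200"}},
    {"resource": "vm-web-02", "cost": 80.0, "tags": {"costCenter": "CC-100"}},
    {"resource": "storage-tmp", "cost": 15.5, "tags": {}},
]


def chargeback(line_items, tag_name="costCenter", untagged="UNTAGGED"):
    """Sum costs per tag value; resources without the tag land in one bucket."""
    totals = defaultdict(float)
    for item in line_items:
        key = item["tags"].get(tag_name, untagged)
        totals[key] += item["cost"]
    return dict(totals)


for cost_center, total in sorted(chargeback(LINE_ITEMS).items()):
    print(cost_center, total)
```

Keeping an explicit bucket for untagged resources makes gaps in the tagging discipline visible instead of silently dropping their cost.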

Billing

Azure employs a subscription-based billing model. All subscriptions are bundled into the statement that comes with the enterprise enrollment. Depending on the technical implementation of solutions, enterprises can have only a few subscriptions or hundreds of them. Either way, you should have a method in place for billing the costs of the Azure resources used, so that you maintain transparency and control in the billing process. Very often, enterprises consider changing their cost center model to reflect the new, usage-dependent nature of these costs.

In addition to the aforementioned resource tagging option, most customers rename the Azure subscription to include pertinent information such as the cost center. Others add the department name or the name of the project owner. Each of these solutions works as long as nothing changes. If a new cost center or a new project owner is assigned, you must change the respective subscription names.

Whether your company can use this kind of model also depends on the way you are using Azure and

how many Azure subscriptions you can handle. There is no one-size-fits-all approach to the question

of how many subscriptions a company should have. Some companies choose to have as few as

possible while watching the limits of a single subscription.

Other companies have decided to go for a more granular approach and assign at least one subscription to each project they start. Both solutions, as well as the myriad variations, have their limits. In the limited-subscription model, you might reach the upper limit of a resource type and need to expand to more subscriptions. However, maintaining many subscriptions makes billing complex and can raise the issue of connecting resources that reside in different subscriptions. Depending on the customer’s products, you must define technical and security models.
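The trade-off in the limited-subscription model can be estimated up front by comparing planned resource counts with per-subscription limits. The following Python sketch is a rough planning aid only: the limit values and resource type names are placeholders invented for this example, so check the currently published Azure subscription limits before relying on any number.

```python
import math

# Placeholder per-subscription limits (NOT actual Azure limits).
LIMITS = {"storage_accounts": 200, "virtual_networks": 50}

# Hypothetical planned resource counts for the whole company.
PLANNED = {"storage_accounts": 450, "virtual_networks": 30}


def subscriptions_needed(planned, limits):
    """Smallest number of subscriptions so that no resource type exceeds its limit.

    Assumes resources can be spread evenly across subscriptions.
    """
    needed = 1
    for resource_type, count in planned.items():
        needed = max(needed, math.ceil(count / limits[resource_type]))
    return needed


print(subscriptions_needed(PLANNED, LIMITS))
```

The result is only a lower bound. Organizational reasons, such as one subscription per project, can push the number higher.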

Security standards and policies

When transitioning to the cloud, security plays a crucial role for all enterprises, and Microsoft is

constantly working on the products to adapt to the latest developments and support our customers’

security requirements where possible.

A good starting point for security and Azure is the Trust Center. Here, customers can take advantage

of a collection of resources that are specific to the topic. Customers in highly regulated industries like

healthcare, or government entities, need to verify that the services of Azure comply with applicable

security controls.

The Cloud Security Alliance (CSA) has prepared the Cloud Control Matrix. You can use this matrix to

assess the security risk of any cloud provider. Like many other reputable cloud providers, Microsoft

is a member of the CSA.

The special landing page for Azure security provides you with further details about the available

security measures that you can use to protect your company. This page also offers further guidance

with respect to all Azure-related security topics.

When it comes to Azure governance and security, several options exist, and you must always perform

a balancing act between customer usability and security. Beyond the high-level options mentioned

earlier, customers can use the built-in Security Center on a per-subscription basis or define their

own compliance rules on a more granular level by using Azure policies. The task of governance

now is to define the framework that cloud solution architects can then use to protect the

solutions that reside in Azure. For the latest recommendations on securing your Azure environment,

visit the Azure Trust Center.

Rights and role model

Most companies have established access rights and user roles for their IT services to support the business

and fulfill regulatory requirements as well as to reflect organizational responsibilities and duties. An

outsourcing contract is also very often a driver for rights and roles models.

To maintain a clear pattern of duty and responsibility and have that reflected in the rights

and role implementation, we highly recommend adopting the cloud permission models.

With Azure, you can adopt a Role-Based Access Control (RBAC) model. In addition to the long list of

built-in roles, you can create your own custom roles.

The best practice is to make use of the built-in roles as much as possible; only if none of these roles

apply should you create a custom role. Sometimes roles and duties are confused. The built-in roles are

technical roles that you can assign to users or groups. Assigning multiple roles to a given user makes it possible

for that user to carry out their duties. We know of cases in which customers invested a lot of effort in

creating a custom role like User/Group/Computer-Operator, but it would have been easier to assign

several existing roles to the users for the same purpose.
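The idea of combining existing roles instead of building a custom one can be sketched as follows. This is a minimal illustration, assuming that a user's effective permissions are the union of all assigned roles. The role names mirror Azure built-in roles, but the action lists are shortened placeholders and not the real role definitions; `effective_actions` is a hypothetical helper.

```python
# Illustrative only: the action lists below are shortened placeholders,
# not the actual definitions of the Azure built-in roles.
BUILT_IN_ROLES = {
    "Reader": {"*/read"},
    "Virtual Machine Contributor": {"Microsoft.Compute/virtualMachines/*"},
    "Storage Account Contributor": {"Microsoft.Storage/storageAccounts/*"},
}


def effective_actions(assigned_roles):
    """Return the union of the actions granted by all assigned roles."""
    actions = set()
    for role in assigned_roles:
        actions |= BUILT_IN_ROLES[role]
    return actions


# An operator who needs to read everything and manage VMs gets two
# built-in roles instead of one hand-crafted custom role.
operator_roles = ["Reader", "Virtual Machine Contributor"]
print(sorted(effective_actions(operator_roles)))
```

If a duty changes, you adjust the list of assigned roles rather than maintaining a custom role definition.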

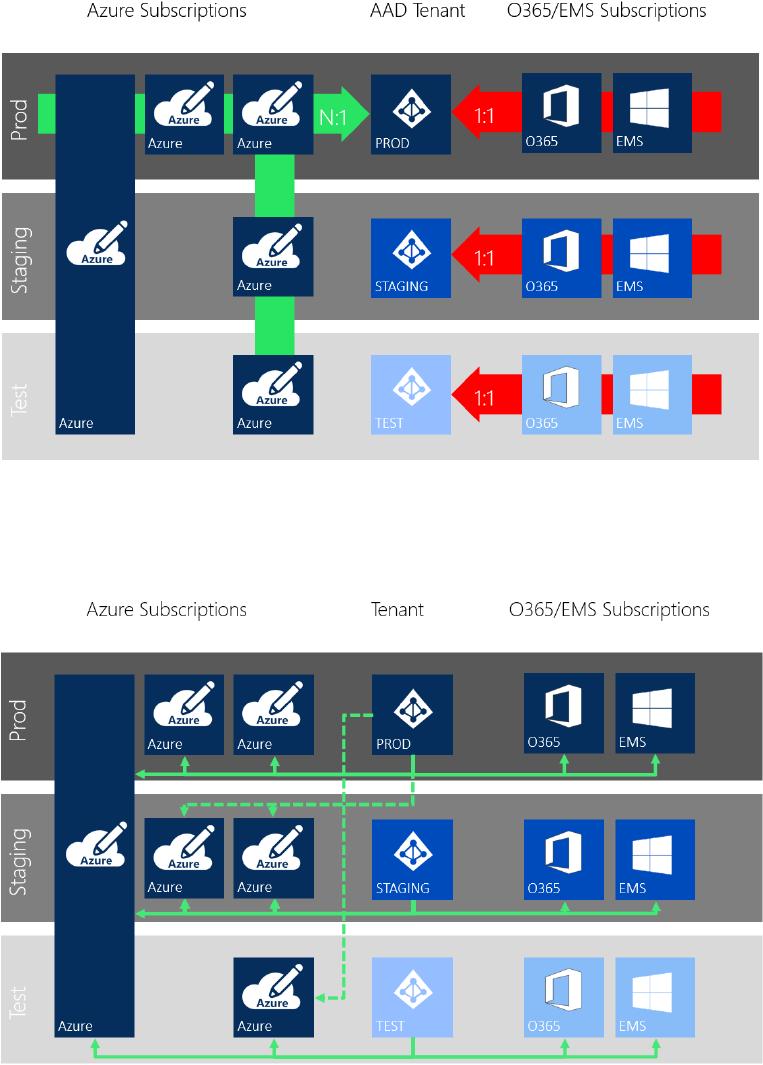

Tenant and subscription management

As part of the rights and role model, you must incorporate a new component: the Azure tenant and

subscription model. It should reflect your design considerations for Azure tenancy. To help you

understand better, the following is Microsoft’s description of a tenant:

“In the cloud-enabled workplace, a tenant can be defined as a client or organization

that owns and manages a specific instance of that cloud service. With the identity

platform provided by Microsoft Azure, a tenant is simply a dedicated instance of

Azure Active Directory that your organization receives and owns when it signs up for

a Microsoft cloud service such as Azure or Office 365.”

More info You can learn more by going to https://docs.microsoft.com/azure/active-directory/active-directory-whatis.

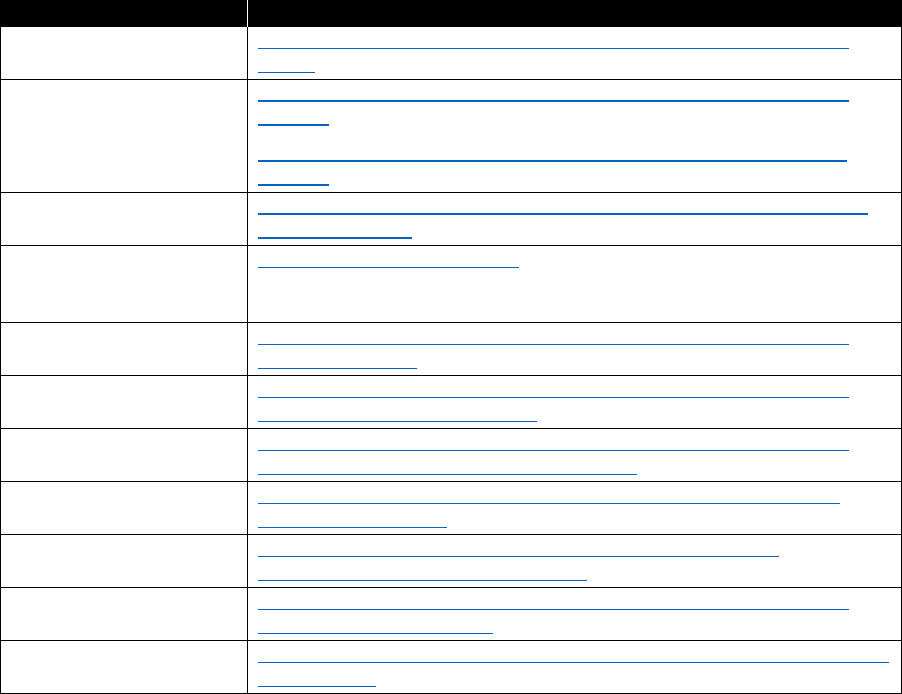

Each Azure tenant can have multiple Azure subscriptions assigned to it, and you should manage these

following a dedicated rights and role model. When it comes to deciding whether to have one or

multiple tenants, you need to consider several aspects, both technical and organizational:

• Authentication and directory—user management and synchronization

• Azure Service integration

• IaaS, PaaS, SaaS; for example, Citrix, WebEx, Salesforce (approximately 3,400 applications)

• Service workloads (Microsoft Exchange, SharePoint, Skype for Business, etc.)

• Administration (of the tenants)

• Support and Helpdesk (also across tenants)

• Licensing and billing

As the number of tenants increases, the complexity of the solution grows significantly. We recommend

that you limit the number to the absolute minimum. Allow new tenants only if your organization is

prepared and they have a dedicated purpose that matches the effort. Some of the most common

reasons we see for customers to consider multiple tenants are the following:

• The customer is a conglomerate of different companies, and each one needs to be a separate

legal owner for Office 365 services.

• Different companies or business units belonging to the same parent company are autonomous

from an IT perspective and make different choices, at different times.

• Different companies or business units belonging to the same parent company require completely

segregated administration privileges.

• Global user distribution: concerns about performance in accessing Office 365 services out of one

single region.

• Datacenter location, which can be driven by regulatory requirements.

More info To learn more about subscription and account management, you can go to

https://docs.microsoft.com/azure/virtual-machines/windows/infrastructure-subscription-accounts-guidelines.

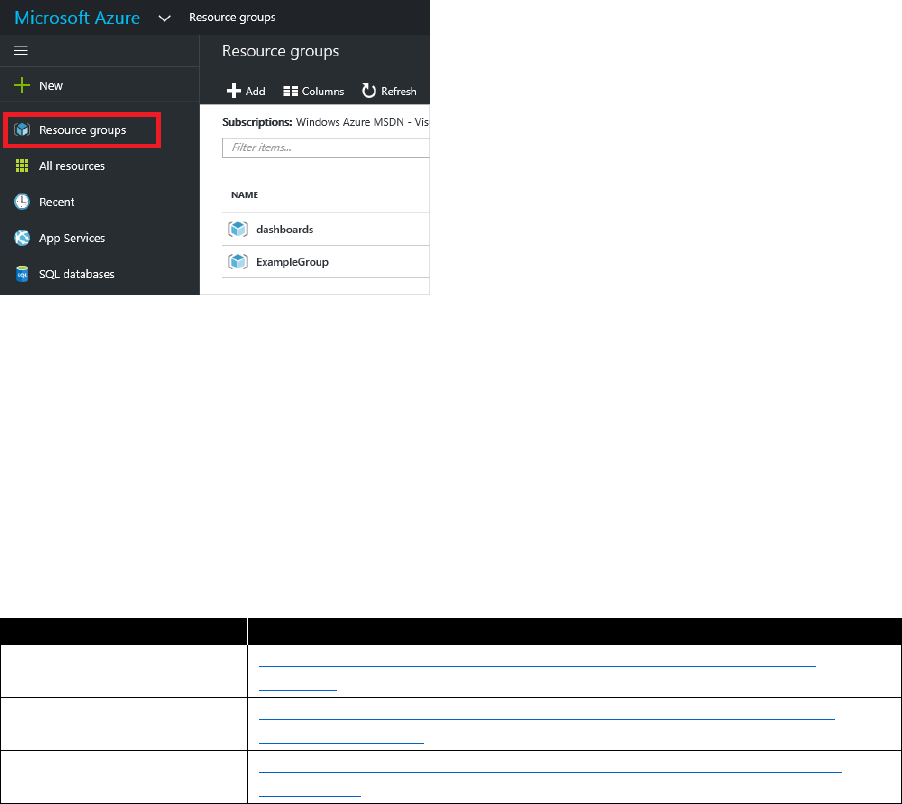

Azure administration

Typically, Azure administration is understood to mean using the Azure administrative portal to carry

out the tasks related to the solution you are developing. However, when it comes to Azure

governance, administration might require a more holistic approach. Depending on your duties and

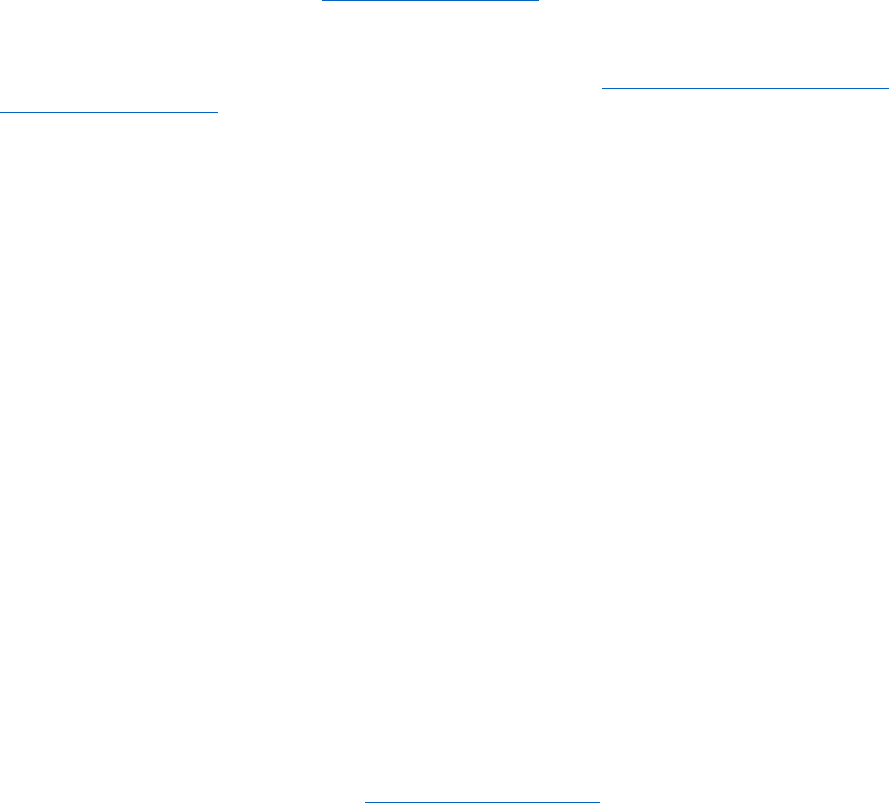

tasks, you might need to visit one of the Azure portals listed in Table 1-2.

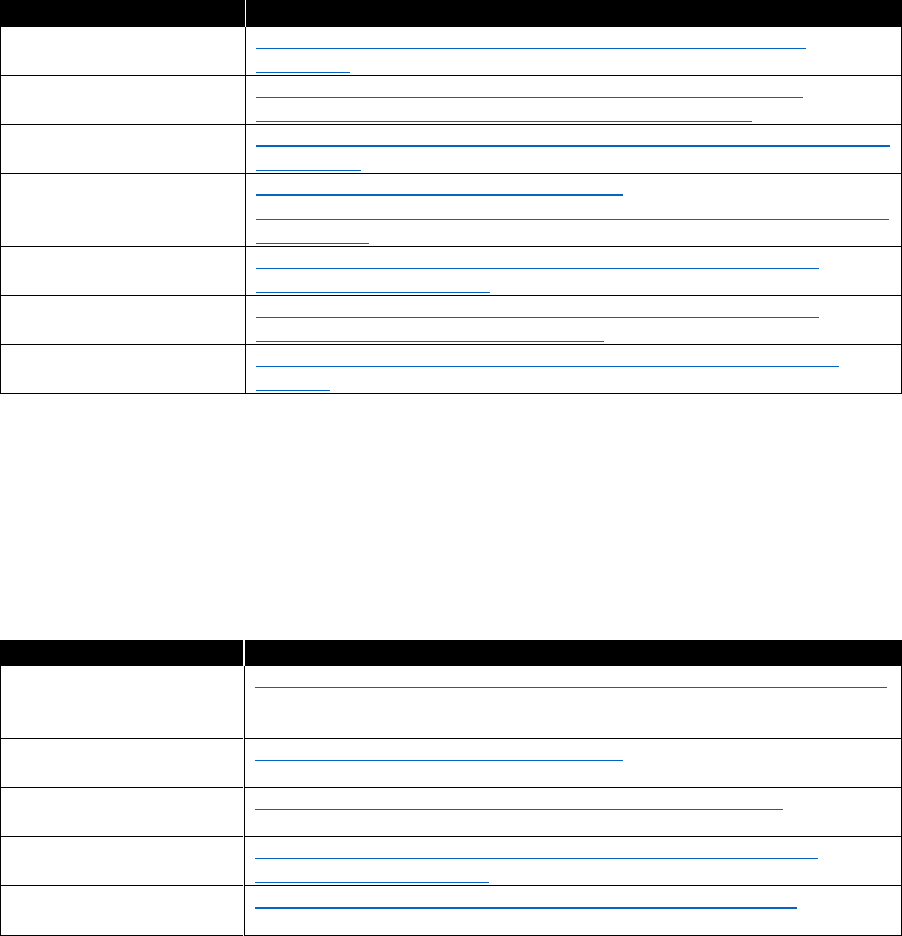

Table 1-2: Azure administration

| Portal | URL | Purpose |
| --- | --- | --- |
| Azure Enterprise portal | https://ea.azure.com | Managing the EA and creating department/account levels |
| Azure portal | https://portal.azure.com | The standard portal to work with Azure |
| Azure Account portal | https://account.windowsazure.com | Managing the subscriptions that are assigned to a specific account level |
| Office 365 portal | https://portal.office.com | Managing the Office 365 productivity suite with a direct link to manage Azure Active Directory |

Which portal you should use will depend on your business needs.

License management

Usually, each Azure service is licensed under a pay-as-you-go model. The same is true for follow-on

support of the service. Some services come with support included in the monthly fee; for others, you

might need to pay an extra fee. Be sure to read the summary of every installation to get the latest

information about the licensing and support model it uses. We recommend contacting your Microsoft

sales representative, who might be able to save you license fees through a negotiated EA or through

the Azure Hybrid Benefit.

In Microsoft terminology, license management traditionally meant counting the servers or workstations

as well as the installed products. This approach was mostly quantitative and did not focus on the

individual user. With Azure, licenses are still needed, but they mostly come with

subscriptions like Azure subscriptions or Office 365 subscriptions. Again, these are quantitative.

When it comes to server products and operating systems, it’s a different story. Some of them are

automatically licensed when installed via the Azure portal. Others still need additional licenses,

sometimes even from third-party providers. So, if you use third-party products, you will receive

a separate bill from that software provider.

License assignment

After you acquire licenses for the operating system or the server products, you might need

to allocate the licenses to the products. You can carry out allocation manually by entering license keys

into products or by using tools from the vendor to ensure the proper usage of licenses—consult the

relevant product documentation for virtual machine licensing.

A very different method applies to the subscription-based model of, for instance, Office 365 or Azure

AD. You can assign acquired licenses to your tenant and you can see them in the portal. Still, a per-

user assignment of licenses is required. Here are some examples for licensing yourself and your users

in Azure Active Directory.

Your organization as well as your technical implementation must pay attention to the life cycle of a

user and make sure the right licenses are allocated to the right user object. Very often this process is

added to the Identity and Access Management (IAM) processes that are already in place.
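The life-cycle requirement above can be sketched as a small reconciliation step inside an IAM process. This is a minimal illustration under stated assumptions: the user records, the role names, and the license names are hypothetical placeholders (not real SKUs), and `reconcile_licenses` is not an Azure AD API.

```python
# Hypothetical mapping from job role to license names (placeholders, not real SKUs).
REQUIRED = {"employee": ["office365"], "admin": ["office365", "aad_premium"]}


def reconcile_licenses(users, required_by_role=REQUIRED):
    """Return (to_assign, to_remove) as lists of (user_id, license) pairs."""
    to_assign, to_remove = [], []
    for user in users:
        # Inactive users (for example, leavers) should hold no licenses at all.
        wanted = set(required_by_role.get(user["role"], ())) if user["active"] else set()
        current = set(user["licenses"])
        to_assign += [(user["id"], lic) for lic in sorted(wanted - current)]
        to_remove += [(user["id"], lic) for lic in sorted(current - wanted)]
    return to_assign, to_remove


users = [
    {"id": "u1", "role": "admin", "active": True, "licenses": ["office365"]},
    {"id": "u2", "role": "employee", "active": False, "licenses": ["office365"]},
]
print(reconcile_licenses(users))
```

Running such a check whenever a user object changes keeps per-user assignments aligned with the licenses acquired for the tenant.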

Naming conventions

Very often, a significant amount of effort is put into creating naming conventions. If such conventions

exist in your company, you need to adapt them to the resources within Azure.

Here are the main areas of definition:

• Subscriptions

• Resource groups

• Resources

• Storage accounts

• VNets

• Applications

• REST endpoints

Some of the conventions can become very granular, and a few resources like storage accounts come

with their own restrictions because of internet regulations (RFCs).

For resources that are required to be unique across a region or even the entire Azure platform, a

convention that is too strict can leave too little room for unique names. You can include a naming element

that serves as an escape component (for example, a counter or a random string at the tail) to circumvent the constraint. For

further details, refer to this discussion on Azure naming conventions.

Keep in mind that you might handle Azure resources through the command-line interface (CLI) or

PowerShell at some times and through the Azure portal at others. Your naming convention should

take that into account and place a distinct qualifier at the beginning of a name instead of

putting it at the end where it might be truncated in dialogs.
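A naming helper along these lines might look like the following sketch. The field order, the hyphen separator, and the two-digit counter are assumptions made for illustration, not a prescribed convention. The storage account rule used here (3 to 24 characters, lowercase letters and digits only) is one of the resource-specific restrictions mentioned above.

```python
import re


def build_name(qualifier, workload, environment, counter):
    """Put the distinguishing qualifier first and a counter at the tail."""
    return f"{qualifier}-{workload}-{environment}-{counter:02d}"


def storage_account_name(qualifier, workload, counter):
    """Build a storage account name: 3-24 lowercase letters and digits only."""
    name = re.sub(r"[^a-z0-9]", "", f"{qualifier}{workload}".lower())
    suffix = f"{counter:02d}"
    # Truncate the body, never the counter, so names stay unique.
    name = name[: 24 - len(suffix)] + suffix
    if len(name) < 3:
        raise ValueError("storage account name too short")
    return name


print(build_name("fin", "payroll", "prod", 1))
print(storage_account_name("Fin", "Payroll-Data", 3))
```

Because the qualifier leads the name, it remains visible even when a portal dialog cuts off the end of a long name.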

Organizational cloud readiness

Next to the functional changes that your company might need to drive its digital transformation, there

is another scope that requires attention: the organization. Although the functional changes can often be

described and written down and affect people only indirectly, organizational readiness is directly

aimed at empowering your employees.

New technologies, new ways of interacting, or new ways of delivering service inside the company or

to our customers are huge changes, especially for established organizations. This kind of disruptive

approach, which very often is used to be more successful or copy competitors, sometimes puts an

enormous strain on the workforce. Examples are Airbnb and the hotel industry, and Uber and the taxi

industry. To keep Airbnb from taking away more and more customers, hotel companies needed to react.

The same was true for cab operators.

Now, for organizations to deliver something similar to Uber or Airbnb and to make sure they also find

a new home in the changed world, some companies even use change agents during the process.

The following sections describe approaches that have proven to be valuable for enterprises that keep the

organizational scope in mind.

Cloud competence center

Many customers have found it helpful to establish a “Center of Knowledge” for Azure within their

organization. The intention behind that decision is to create a pool of knowledge from which others in

the organization can learn. This group can help define blueprints to ensure that all of the needed

requirements such as security, operations, models, and so on are considered while the new, cloud-

based solution is designed and implemented.

Definition of needed skills and training requirements

There are a lot of training programs available to help companies successfully make the transition to

Azure and to build up a highly skilled team. Which program you choose very much depends on your

business. Financial considerations are another aspect that you should consider.

There are many online courses as well as instructor-led courses available. Many of them are free of

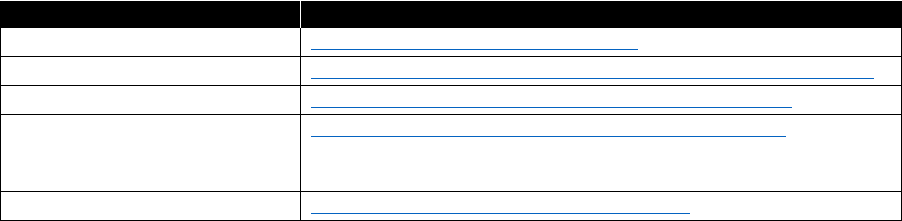

charge. Some are delivered through partners and certified trainers. Table 1-3 provides information

about some of these courses.

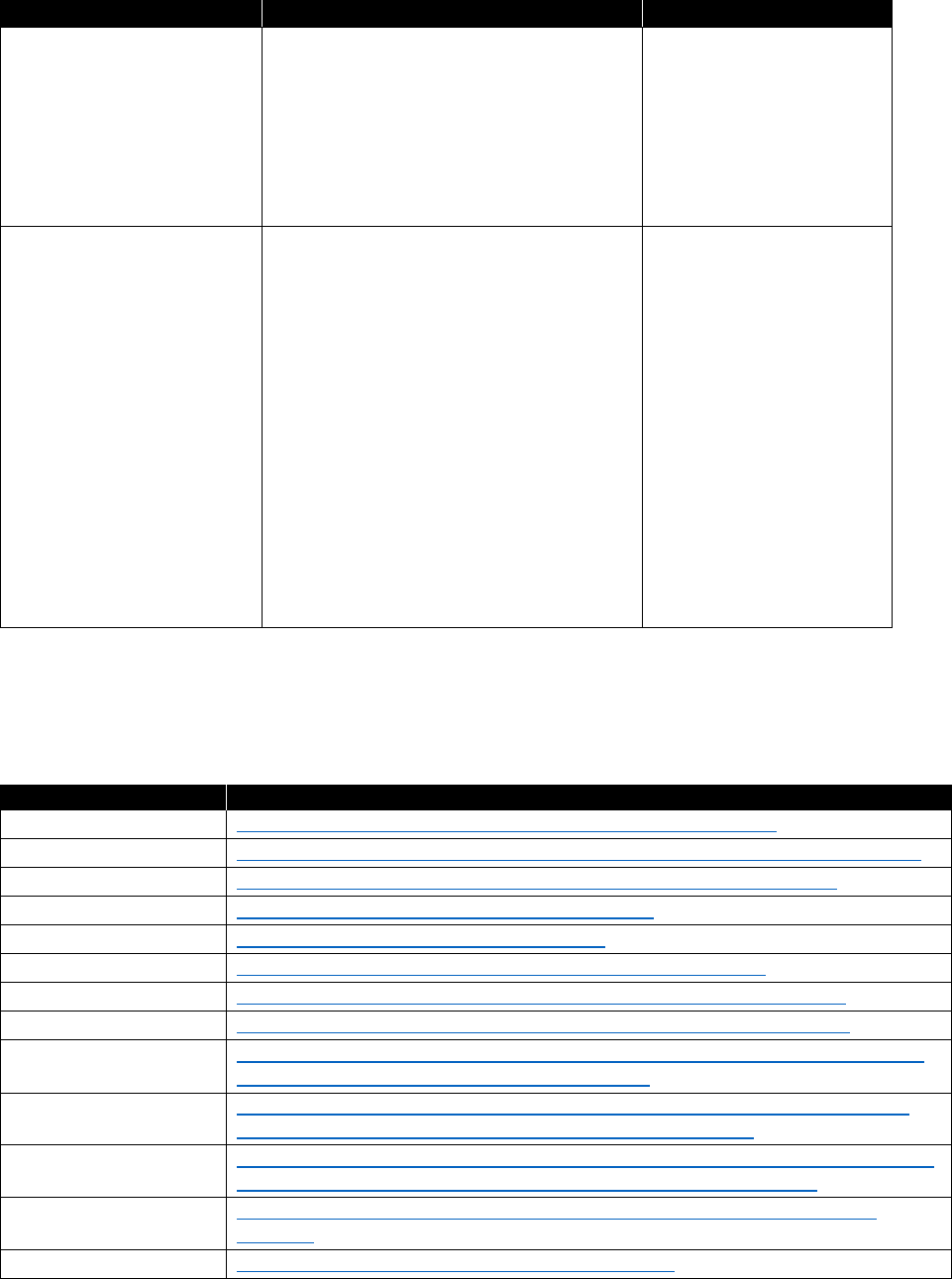

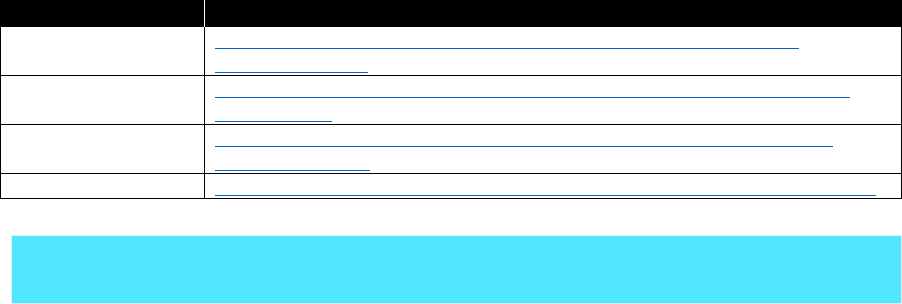

Table 1-3: Azure training and certification

| Topic | Resource |
| --- | --- |
| General skill training | https://azure.microsoft.com/training/ |
| Microsoft Virtual Academy | https://mva.microsoft.com/search/SearchResults.aspx#!q=Azure |
| Azure certification | https://www.microsoft.com/learning/azure-exams.aspx |
| Design guidance, reference architectures, design patterns, and best practices | https://docs.microsoft.com/azure/#pivot=architecture |
| Azure Essentials | https://www.microsoft.com/azureessentials |

Cloud readiness scopes

Following are typical areas of technology and knowledge:

• Identity. One of the cornerstones of the entire picture of Azure is the identity of a person.

Microsoft sees the identity as the control plane of the modern world. You need to review your

existing identity structure, which might be based on an on-premises implementation of Active

Directory, to determine whether it can serve the new purposes described earlier in this chapter,

such as rights and role models or license assignment.

Many customers use this opportunity to review their current IAM system and modernize it to

prepare it for new tasks. A profound knowledge about identities, the relation to Azure AD, and its

security options should reside in the aforementioned competence center. Typical areas of interest

include the following use cases:

• Identity integration. What does the company already have in place to integrate identities into

its applications, and is it a centralized approach or a per-application approach with a relatively

independent model?

For the success of the future identity model, the company must decide which way to go for its

identity integration. After the decision has been made, you can build a solution based on that

decision. Plan for iterations in the design process to find the best solution and review against

your security requirements.

• Synchronization with on-premises Identity Management Systems. What is the life cycle

for identity management within the organization (in terms of user permissions and roles), and

how can this process be securely extended for cloud identities?

Depending on the identity integration decision, you can develop a solution to ensure the

required life cycle of user objects, group objects, and so on.

• Authentication scenarios. Will all solutions—whether on-premises or in the cloud—work

with an identity that has been given to the user?

After you have designed the identity integration and the identity life cycle, we recommend a

use case–based approach to ensure that all applications, on-premises and cloud based, can

consume the identities the users will be assigned.

• Multiforest considerations. Does your company maintain a multiforest implementation

on-premises due to historical or even regulatory reasons?

Some historical scenarios and some corporate decisions might not fit ideally in the cloud

identity scenario. One example that we see very often is that a corporate email domain such as

contoso.com is a must, but all lines of business also require their own mail

servers. You can do this in Azure with a combination of Office 365 and Azure AD, but

this might not be the most effective solution. Also, if user objects for one individual are kept

in several forests simultaneously, an easy way into the cloud is not possible. You need to

consider some of the constraints of legacy implementations of Active Directory when it comes

to connecting it with Azure AD.

• Connectivity. Another core component of all successful cloud adoptions is thorough

knowledge of the network that is currently in place, how your applications are using the

network, and how Azure is handling it.

There are multiple ways to securely connect your on-premises network to your private segment in

Azure or give your next business application a secure home in any of your subscriptions, even

without direct connectivity to your backbone. You can find a comprehensive list of options in

Chapter 2.

As with identities, your competence center should be knowledgeable in all aspects of networking

and how to connect to Azure.

• Development. Although the first two areas of knowledge are needed with every customer, the

topic of development might not be needed for all. If you consider yourself as a company that

needs cloud development to also be under governance control, the modern methods of cloud

application development and terms like DevOps, or technologies like containers should be a

priority. Chapter 3 presents more information and recommendations for application development

and operations.

Development of methodology for cloud integration

One of the major purposes of a cloud competence center in many enterprises is to define standards

and develop methodologies for adoption of Azure services. This ensures a higher level of quality,

promotes reuse of knowledge, and maintains alignment with the

latest business or security requirements.

Development of cloud-integration blueprints: enterprise level

Some business solutions are designed for internal use only and target individual contributors within

the company.

Based on the solution’s requirements, the competence team should develop technology blueprints

that ensure that the overall Azure architecture, with its tenant decisions, network recommendations,

operational requirements, and so on, is applied and that a proper SLA can be defined for the final

implementation.

Development of cloud-integration blueprints: partners, suppliers, and customers

Some other solutions are designed to cooperate with partners or are aimed directly at the customer.

Depending on the business of your enterprise, you might need additional blueprints to serve as a

starting point for solutions that serve that purpose.

You should pay special attention to the topics of identity, network, and security. You should expect

these areas to become very complex depending on the scale of the solution or the integration needs

of the solution.

13 CHAPTER 2 | Architecture

CHAPTER 2

Architecture

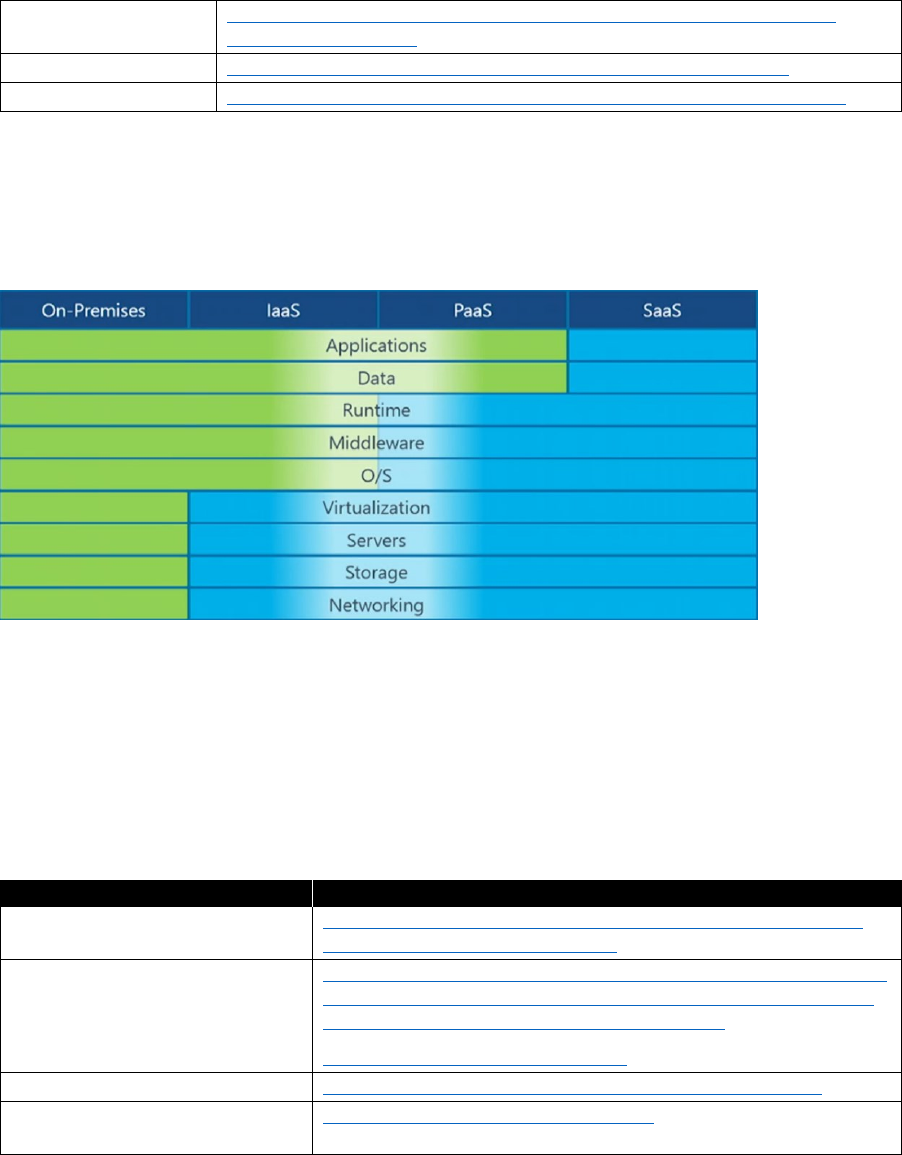

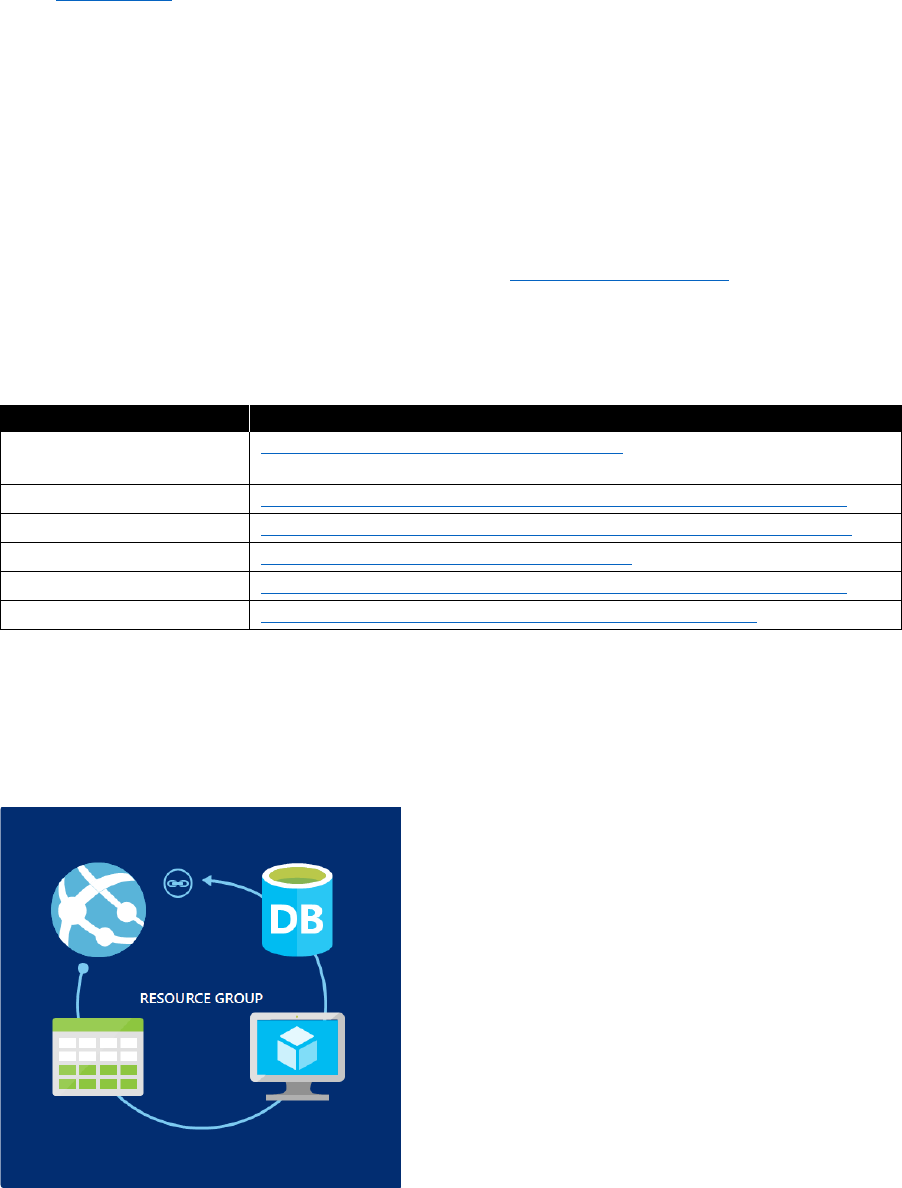

When an enterprise adopts Microsoft Azure as its cloud platform, it

uses many different services that are owned and managed by multiple

individuals. To allow for governance of its resources, Azure provides some

general features, as illustrated in Figure 2-1.

Figure 2-1: The building blocks of Azure governance

You should use Naming Standards to better identify resources in the portal, on a bill, and within

scripts; for example:

• Follow the naming convention guidance (see the resources table in this section)

• Use camelCasing

• Consider using Azure Policies to enforce naming standards

You can use Azure Policies to establish conventions for resources in your organization. By defining

conventions, you can control costs and more easily manage your resources. For example, you can

specify that only certain types of virtual machines (VMs) are allowed, or you can require that all

resources have a particular tag. Policies are inherited by all child resources. So, if a policy is applied

to a resource group, it is applicable to all of the resources in that group. You can use Azure Policies at

the subscription level to enforce the following:

• Geo-compliance/data sovereignty

• Cost management

• Default governance through required tags

• Prohibit public IP addresses where applicable
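As an illustration of "default governance through required tags", the following sketch shows a policy rule that denies resources lacking a tag. The rule follows the general if/then shape of Azure Policy definitions, but treat it as an example to verify against the current documentation; the tag name `costCenter` is an assumption. The `would_deny` function is a toy local check written for this one rule shape and is not the Azure Policy engine.

```python
import json

# Example rule: deny any resource that has no "costCenter" tag.
# The tag name is a placeholder chosen for this sketch.
REQUIRE_COST_CENTER_TAG = {
    "if": {"field": "tags['costCenter']", "exists": "false"},
    "then": {"effect": "deny"},
}


def would_deny(resource, rule=REQUIRE_COST_CENTER_TAG):
    """Toy local evaluation of a single 'exists' condition on a tag."""
    tag_name = rule["if"]["field"].split("'")[1]
    tag_present = tag_name in resource.get("tags", {})
    expects_present = rule["if"]["exists"] == "true"
    condition_met = tag_present == expects_present
    return condition_met and rule["then"]["effect"] == "deny"


print(json.dumps(REQUIRE_COST_CENTER_TAG, indent=2))
print(would_deny({"name": "vm01", "tags": {}}))
print(would_deny({"name": "vm02", "tags": {"costCenter": "4711"}}))
```

Assigned at the subscription level, such a rule would apply to every resource group and resource below it, because policies are inherited by child resources.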

The Azure Activity Log (previously known as “Audit Logs” or “Operational Logs”) provides insight into

the write operations that were performed on resources in your subscription. Using the

Activity Log, you can determine the “what, who, and when” for any write operations (PUT, POST,

and DELETE) taken on the resources in your subscription. You can also understand the status of the

operation and other relevant properties. You can integrate the Activity Log into existing auditing

solutions.
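The "what, who, and when" question can be sketched against exported log entries as follows. The records are simplified and hypothetical; real Activity Log entries carry more fields, and the field names used here (`caller`, `operationName`, `eventTimestamp`, `status`) are assumptions for this example.

```python
from datetime import datetime

# Simplified, hypothetical records standing in for exported Activity Log entries.
EVENTS = [
    {"caller": "alice@contoso.com",
     "operationName": "Microsoft.Compute/virtualMachines/write",
     "eventTimestamp": "2018-03-01T09:00:00", "status": "Succeeded"},
    {"caller": "bob@contoso.com",
     "operationName": "Microsoft.Compute/virtualMachines/delete",
     "eventTimestamp": "2018-03-01T10:15:00", "status": "Succeeded"},
]


def deletions(events):
    """Return (who, what, when) for every delete operation, oldest first."""
    rows = [
        (e["caller"], e["operationName"], datetime.fromisoformat(e["eventTimestamp"]))
        for e in events
        if e["operationName"].lower().endswith("/delete")
    ]
    return sorted(rows, key=lambda row: row[2])


for who, what, when in deletions(EVENTS):
    print(f"{when:%Y-%m-%d %H:%M} {who} {what}")
```

The same filtering idea applies when the entries are forwarded to an existing auditing solution.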

You apply tags to your Azure resources to logically organize them by categories. Each tag consists of

a key and a value. Use resource tags to enrich your resources with metadata such as the following:

• Bill to

• Department (or business unit)

• Environment (production, stage, development)

• Tier (web tier, application tier)

• Application owner

• Project name

• Composite app (any resources tagged with the same value are considered part of the same

application service)

• VM workload (identifies the primary application workload running in the VM; for example, SQL Server or

Jenkins)

• VM role (identifies the role the VM is delivering in the larger service—for example, database or

build server)
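Tags such as "Bill to" or "Department" become useful once costs are grouped by them. The following sketch assumes a hypothetical list of resources with a `tags` dictionary and a `monthly_cost` value; the tag keys and the amounts are invented for illustration.

```python
from collections import defaultdict


def cost_by_tag(resources, tag_key):
    """Sum monthly cost per tag value; untagged resources are grouped separately."""
    totals = defaultdict(float)
    for resource in resources:
        totals[resource["tags"].get(tag_key, "untagged")] += resource["monthly_cost"]
    return dict(totals)


resources = [
    {"name": "vm-web-01", "tags": {"department": "sales", "environment": "production"}, "monthly_cost": 100.0},
    {"name": "vm-db-01", "tags": {"department": "sales", "environment": "production"}, "monthly_cost": 50.0},
    {"name": "vm-test-01", "tags": {"environment": "development"}, "monthly_cost": 25.0},
]
print(cost_by_tag(resources, "department"))
```

The "untagged" bucket shows why a policy that requires tags is a useful complement: without it, part of the bill cannot be attributed.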

A resource group is a container that holds related resources for an Azure solution. The resource group

can include all of the resources for the solution or only those resources that you want to manage as a

group. You decide how you want to allocate resources to resource groups based on what makes the

most sense for your organization. Following are some considerations regarding resource groups:

• All of the resources in your group should share the same life cycle. You deploy, update, and delete

them together. If one resource, such as a database server, needs to exist on a different

deployment cycle, it should be in another resource group.

• Each resource can exist in only one resource group.

• You can add a resource to or remove a resource from a resource group at any time.

• You can move a resource from one resource group to another group. For more information, see

Move resources to new resource group or subscription.

• A resource group can contain resources that reside in different regions.

• You can use a resource group to scope access control for administrative actions.

• A resource can interact with resources in other resource groups. This interaction is common when

the two resources are related but do not share the same life cycle (for example, web apps

connecting to a database).

Azure Role-Based Access Control (RBAC) gives you fine-grained access management for Azure. Using

RBAC, you can grant only the amount of access that users need to perform their jobs. You should use

predefined RBAC roles where feasible, and only define custom roles where needed. You should follow

the principle of granting the least required privilege.

As an administrator, you might need to lock a subscription, resource group, or resource to prevent

other users in your organization from accidentally deleting or modifying critical resources. You can set

the lock level to CanNotDelete or ReadOnly. When you consider using Azure resource locks to protect

resources from unintended deletion, assign Owner and User Access Administrator roles only to people

whom you want to allow to remove locks.
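The effect of the two lock levels can be modeled in a few lines. This sketch assumes that a resource is subject to its own locks plus those of its parent scopes and that the most restrictive lock wins; `is_allowed` is a hypothetical helper for reasoning about locks, not an Azure API.

```python
def is_allowed(operation, inherited_locks):
    """Check whether 'read', 'write', or 'delete' is permitted under the given locks.

    inherited_locks is the collection of lock levels that apply to the
    resource, including those set on its resource group or subscription.
    """
    if operation == "read":
        return True
    if "ReadOnly" in inherited_locks:
        # ReadOnly blocks both modification and deletion.
        return False
    if operation == "delete" and "CanNotDelete" in inherited_locks:
        # CanNotDelete still allows reading and modifying.
        return False
    return True


print(is_allowed("write", ["CanNotDelete"]))
print(is_allowed("delete", ["CanNotDelete"]))
print(is_allowed("write", ["ReadOnly"]))
```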

Azure Automation provides a way for users to automate the manual, long-running, error-prone, and

frequently repeated tasks that are commonly performed in a cloud and enterprise environment. You

can use Azure Automation to define runbooks that can handle common tasks such as shutting down

unused resources and creating resources in response to triggers. With Azure Automation, you can do

the following:

• Create Azure Automation accounts and review community-provided runbooks in the gallery

• Import and customize runbooks for your own use

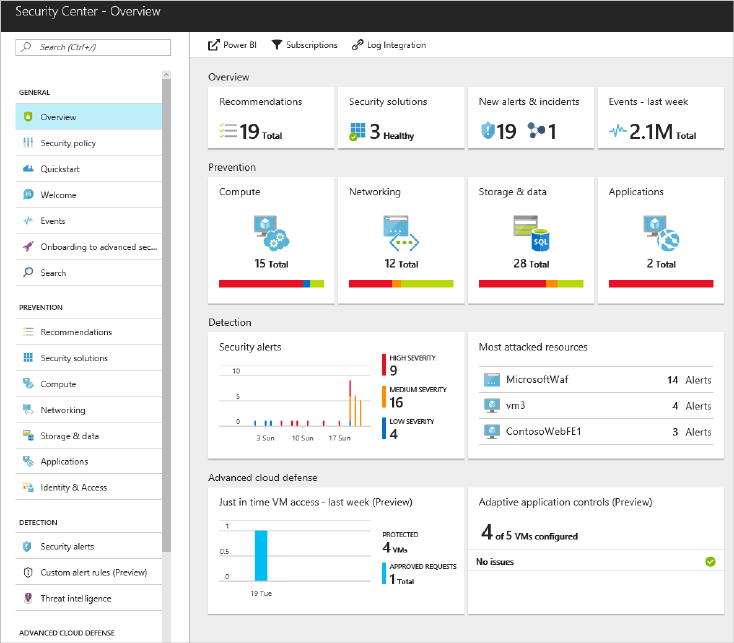

Azure Security Center helps you to prevent, detect, and respond to threats with increased visibility

into and control over the security of your Azure resources. It provides integrated security monitoring

and policy management across your Azure subscriptions, helps detect threats that might otherwise go

unnoticed, and works with a broad ecosystem of security solutions. You can use the Security Center to

keep control of the security status of resources in your subscriptions:

• Activate data collection of resources you want to be monitored by Security Center

• Consider activating advanced threat management to detect and respond to threats in your

environment

In the next subsections, we do some deep dives into selected topics that you should consider when

starting out with Azure as your public cloud platform. You also should refer to Azure Onboarding

Guide for IT Organizations, which can help customers that are new to Azure to get underway. It

explains the basic concepts of Azure, whereas the guide you’re reading now provides a blueprint and

best practices to roll out Azure on a large scale for customers with solid knowledge and experience

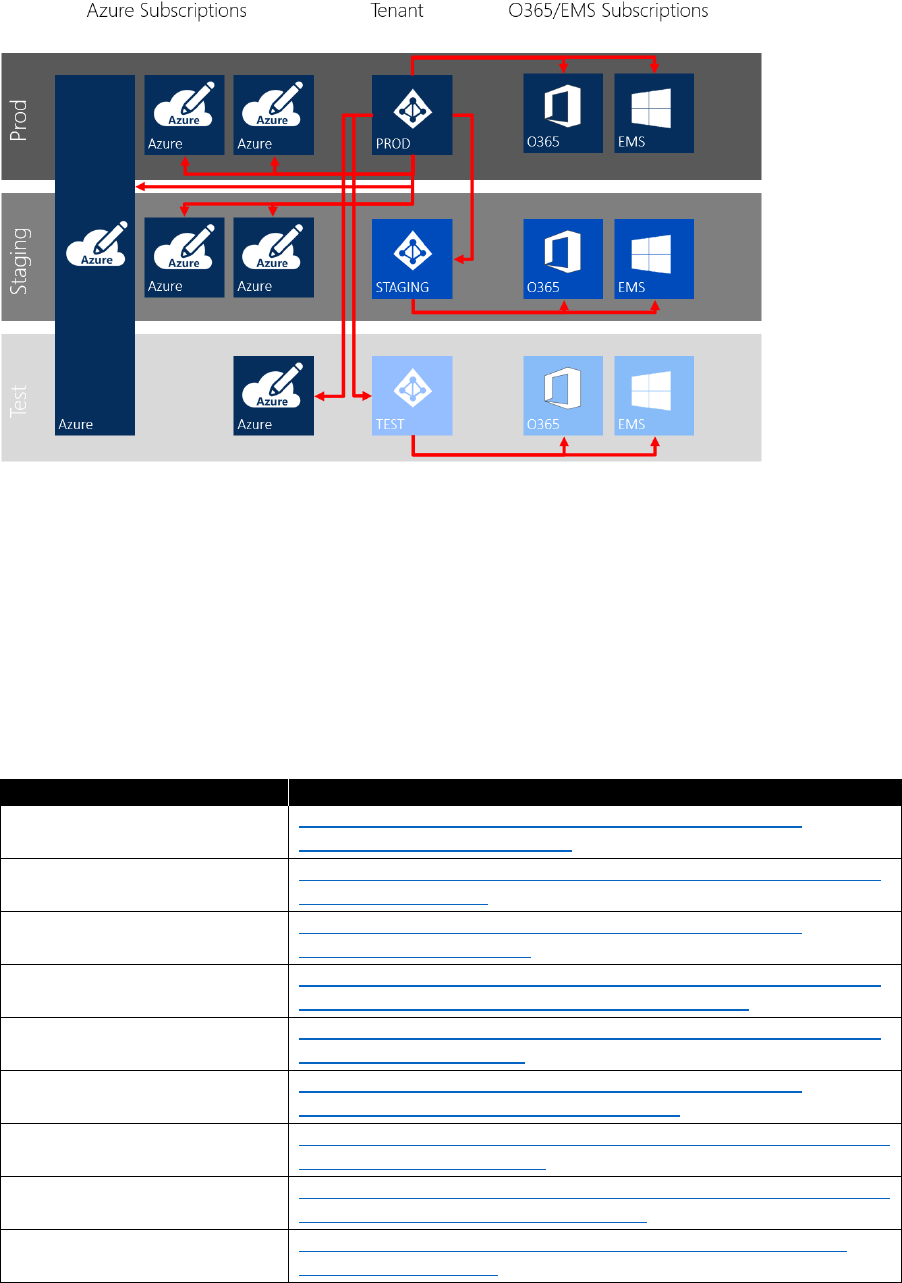

with Azure. Table 2-1 provides resources on Azure architecture.

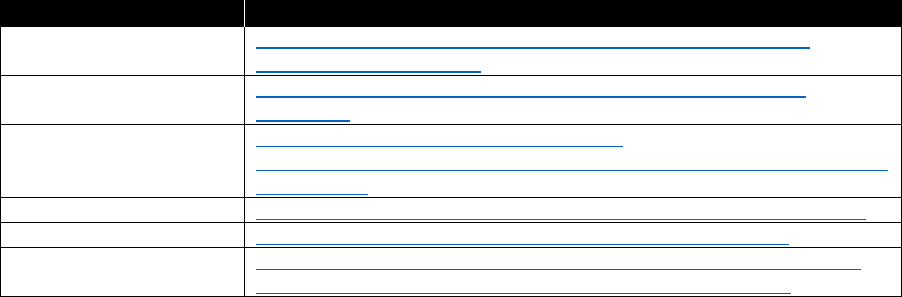

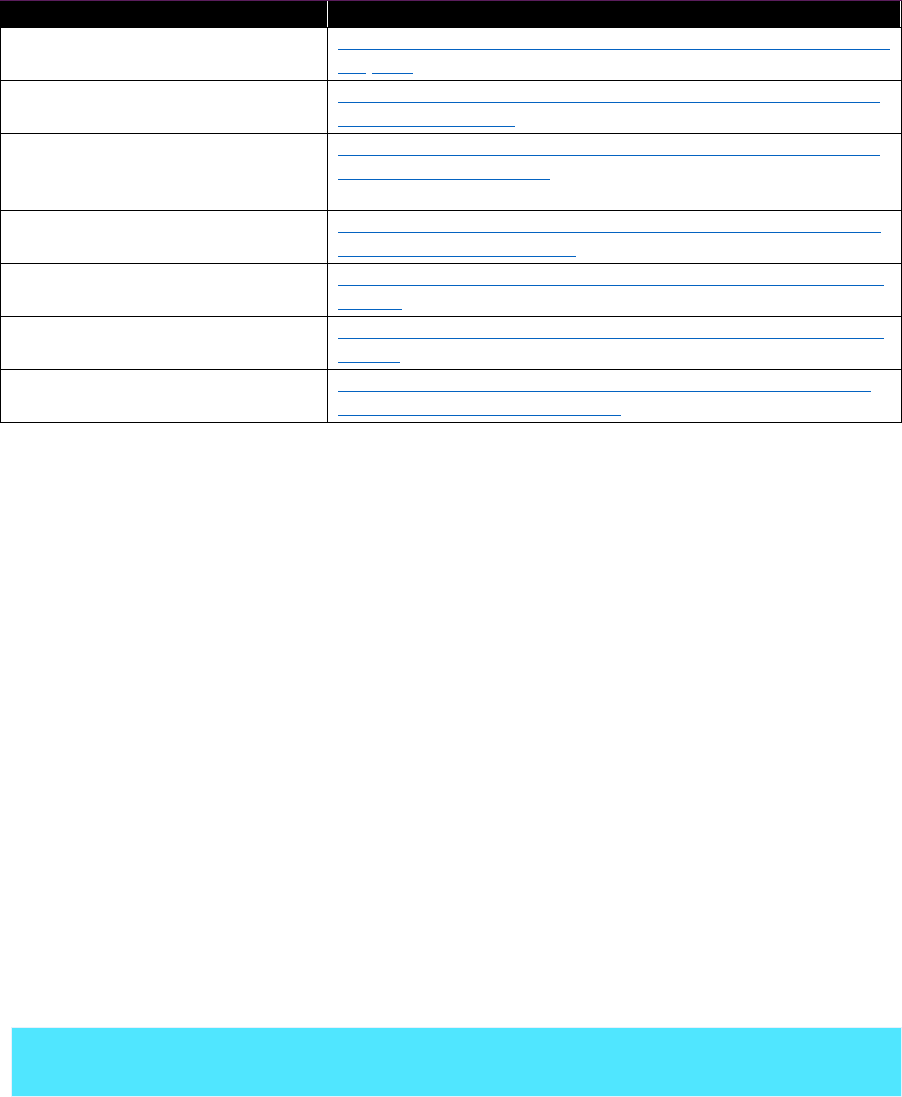

Table 2-1: Architecture resources

| Topic | Resource |
| --- | --- |
| Azure Enterprise scaffold | https://docs.microsoft.com/azure/azure-resource-manager/resource-manager-subscription-governance |
| Naming conventions | https://docs.microsoft.com/azure/guidance/guidance-naming-conventions |
| Azure policies | https://docs.microsoft.com/azure/azure-resource-manager/resource-manager-policy |
| Activity Log | https://docs.microsoft.com/azure/monitoring-and-diagnostics/monitoring-overview-activity-logs |
| Resource tags | https://docs.microsoft.com/azure/azure-resource-manager/resource-group-using-tags |
| Resource groups | https://docs.microsoft.com/azure/azure-resource-manager/resource-group-overview |
| RBAC | https://docs.microsoft.com/azure/active-directory/role-based-access-control-what-is |
| Azure locks | https://docs.microsoft.com/azure/azure-resource-manager/resource-group-lock-resources |
| Azure Automation | https://docs.microsoft.com/azure/automation/automation-intro |
| Security Center | https://docs.microsoft.com/azure/security-center/security-center-intro |

Security

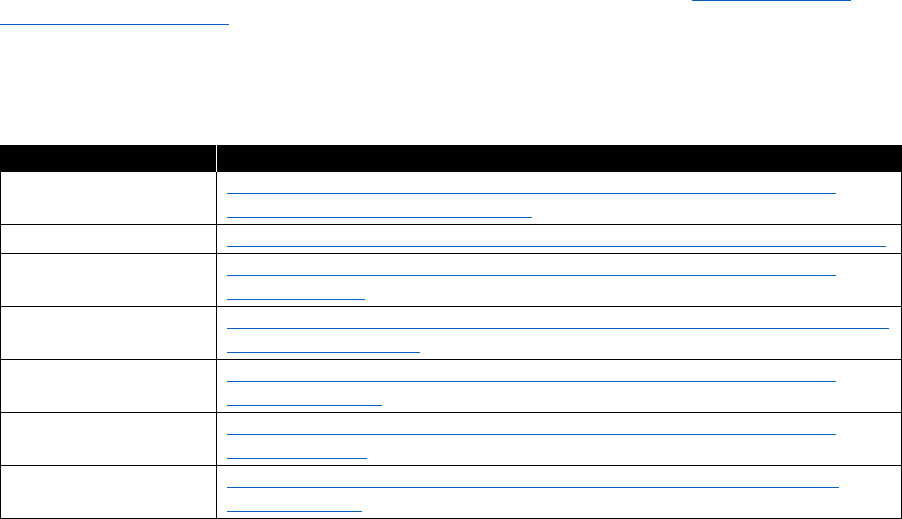

The first thing to understand about cloud security is that there are different scopes of responsibility,

depending on what kind of services you are using. In Figure 2-2, green indicates areas of customer

responsibility, whereas blue areas are the responsibility of Microsoft.

Figure 2-2: Scope of security responsibility

For example, if you are using VMs in Azure (infrastructure as a service, or IaaS), Microsoft is responsible

for securing the physical network and storage as well as the virtualization platform, which includes

patching of the virtualization hosts. But you will need to take care of securing your virtual network

and public endpoints yourself as well as patching the guest operating system (OS) of your VMs.

This document focuses on customer responsibilities. Microsoft responsibilities are not within the

scope of this document. Table 2-2 lists where you can find a whitepaper covering that topic.

Table 2-2: Security resources

| Topic | Resource |
| --- | --- |
| Security responsibility | https://azure.microsoft.com/resources/videos/azure-security-101-whose-responsibility-is-that/ |
| Microsoft measures to protect data in Azure | https://azure.microsoft.com/mediahandler/files/resourcefiles/d8e7430c-8f62-4bbb-9ca2-f2bc877b48bd/Azure%20Onboarding%20Guide%20for%20IT%20Organizations.pdf |
| | https://servicetrust.microsoft.com/ |
| Azure Security | https://docs.microsoft.com/azure/security/azure-security |
| Microsoft Whitepaper Security Incident Response | http://aka.ms/SecurityResponsepaper |

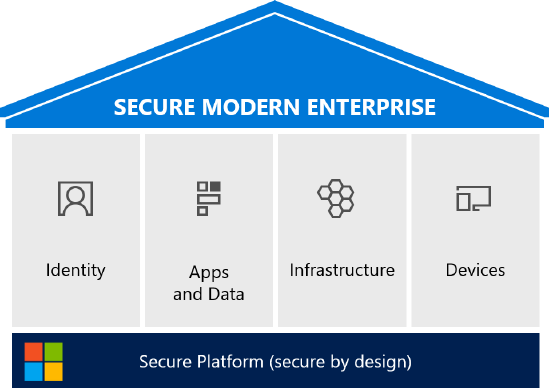

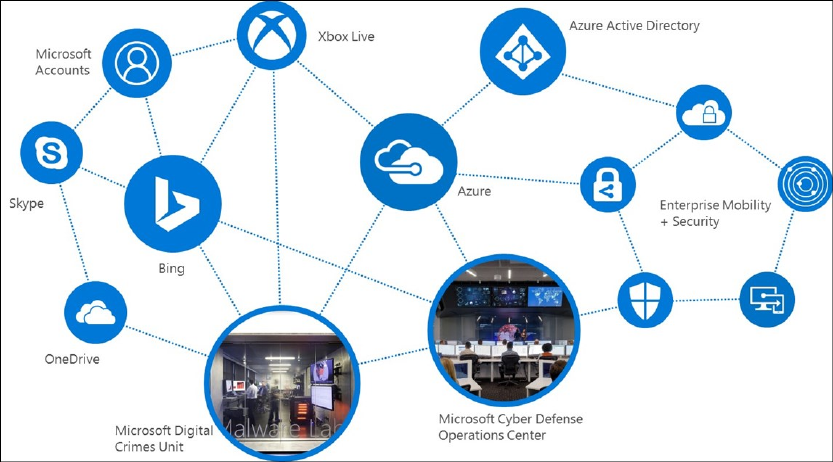

Securing the modern enterprise

Securing a modern enterprise is complex and challenging. Microsoft’s cybersecurity portfolio can help

you to do the following:

• Increase visibility, control, and responsiveness to threats

• Reduce security integration and vendor management costs

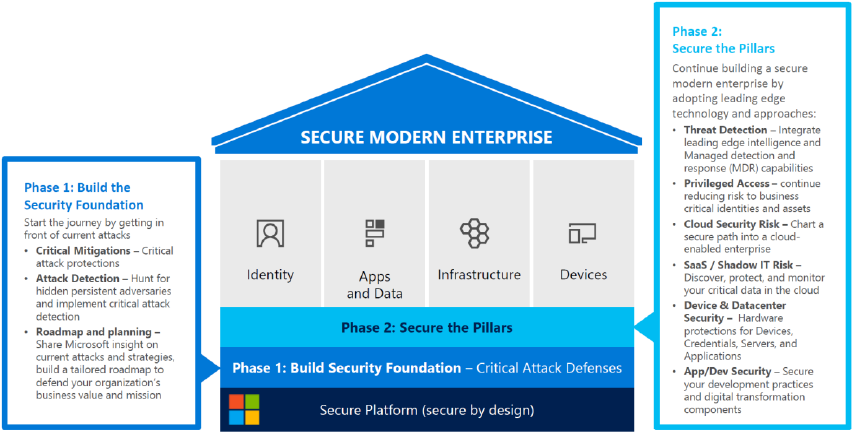

Following are the pillars of a secure modern enterprise (see Figure 2-3):

• Identity. Embraces identity as primary security perimeter and protects identity systems, admins,

and credentials as top priorities

• Apps and Data. Aligns security investments with business priorities including identifying and

securing communications, data, and applications

• Infrastructure. Operates on modern platform and uses cloud intelligence to detect and

remediate both vulnerabilities and attacks

• Devices. Accesses assets from trusted devices with hardware security assurances, great user

experience, and advanced threat detection

Figure 2-3: Components of a secure modern enterprise

Phase 1: Build the security foundation

The Securing Privileged Access (SPA) roadmap helps you to implement your security foundation (see

Figure 2-4). This roadmap is structured in three stages. In stage 1, you mitigate the most frequently

used attack techniques of credential theft by doing the following:

• Separating administrator accounts for administrator tasks

• Implementing the Privileged Access Workstation (PAW) pattern for Active Directory administrators

• Using unique local administrator passwords for workstations

• Using unique local administrator passwords for servers

Figure 2-4: Build the security foundation phases

You can implement stage 2 of the roadmap in one to three months and build on the mitigations of

stage 1:

• Follow the PAW pattern for all administrators and implement additional security hardening

• Use time-bound privileged (Just in Time [JIT]) access for administrative tasks by using Azure Privileged

Identity Management for Azure Active Directory (Azure AD), Privileged Access Management

(PAM) for Active Directory, or JIT virtual machine access in Azure Security Center

• Enforce multifactor authentication (MFA) for privileged access

• Use the Just Enough Administration (JEA) pattern for domain controller maintenance

• Implement attack detection to gain visibility into credential theft and identity attacks by using

Microsoft Advanced Threat Analytics (ATA), Azure Security Center advanced detection, and SQL

Database threat detection
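The time-bound (JIT) access idea from the list above can be sketched conceptually as a grant that carries its own expiry. This is not the API of Azure AD Privileged Identity Management or any other product; `grant_jit`, `is_active`, and the one-hour default are hypothetical choices for illustration.

```python
from datetime import datetime, timedelta


def grant_jit(user, role, now, duration_hours=1):
    """Create a privileged role grant that expires automatically."""
    return {"user": user, "role": role, "expires": now + timedelta(hours=duration_hours)}


def is_active(grant, now):
    """A grant is usable only until its expiry time."""
    return now < grant["expires"]


start = datetime(2018, 3, 1, 9, 0)
grant = grant_jit("alice@contoso.com", "Domain Admin", start)
print(is_active(grant, start + timedelta(minutes=30)))
print(is_active(grant, start + timedelta(hours=2)))
```

Because privileges lapse on their own, a stolen credential is useful to an attacker only within the short activation window.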

Stage 3 of the SPA roadmap strengthens and adds mitigations across the spectrum, allowing for a

more proactive posture:

• Modernize roles and delegation model founded on JIT and JEA

• Smartcard or passport authentication for all administrators

• Administration forest for Active Directory administrators (ESAE)

• Code integrity policy for datacenters allows only authorized executables to run on a machine

• Shielded VMs for virtual datacenters prevent unauthorized inspection or theft by storage and

network admins

Phase 2: Secure the pillars

The next step after covering the basics of your security foundation is to adopt additional, leading-

edge security technologies, including threat detection and better intelligence:

• Build the strongest protections for identity systems and administrators. Protect identities with

threat intelligence and hardware assurances.

• Increase visibility and protection for apps and data in the cloud and on-premises. Reduce the risk

to the applications you develop, Software as a service (SaaS) apps, and legacy/on-premises apps.

• Integrate cloud infrastructure securely. Protect by using hardware integrity and isolation, detect

with analysts and threat intelligence.

• Deploy hardware protections for devices, data, applications, and credentials. Use advanced attack

detection and remediation technology and analyst support.

Table 2-3 lists the needed security resources.

Table 2-3: Security resources

| Topic | Resource |
| --- | --- |
| Cybersecurity reference architecture | https://mva.microsoft.com/training-courses/cybersecurity-reference-architecture-17632 |
| SPA roadmap | http://aka.ms/SPARoadmap |
| ESAE | http://aka.ms/esae |
| PAW | https://docs.microsoft.com/windows-server/identity/securing-privileged-access/privileged-access-workstations |

Identity versus network security perimeter

It’s a universal concept: When you want to protect something, build a perimeter around it. Traditionally, in IT this perimeter is at the network level in the form of a firewall. The purpose of a network perimeter is to repel and detect classic attacks, but attackers can reliably defeat it via phishing and credential theft. At the same time, your data is moving out of the organization via approved or unapproved cloud services. Last but not least, employees need to stay productive wherever they are, using whatever device they carry with them, meaning that your data might be accessed by unmanaged devices. Meeting these new challenges requires you to build a new kind of perimeter in addition to your existing network perimeter: an identity security perimeter.

Why you need an identity security perimeter

Identity controls access to your data. To protect your data, protect it directly at the front door by implementing multifactor authentication and conditional access. Conditions for access might depend on the user’s current location (or IP address), on the user’s device state, or on the user’s general risk score. If any of these configurable conditions are met, a modern identity system either challenges the user for a second authentication factor or denies access entirely.
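The following minimal sketch shows how such a conditional access decision could be evaluated. It is not the Azure AD Conditional Access API. The `SignIn` type, the trusted network range, and both risk thresholds are hypothetical values chosen for illustration, and a real policy engine supports many more conditions.

```python
from dataclasses import dataclass
from enum import Enum
from ipaddress import ip_address, ip_network


class Decision(Enum):
    ALLOW = "allow"
    REQUIRE_MFA = "require_mfa"
    DENY = "deny"


@dataclass(frozen=True)
class SignIn:
    user: str
    source_ip: str
    device_managed: bool
    risk_score: float  # hypothetical scale: 0.0 (low) to 1.0 (high)


# Assumed policy settings, for illustration only.
TRUSTED_NETWORKS = (ip_network("10.0.0.0/8"),)
MFA_RISK_THRESHOLD = 0.3
DENY_RISK_THRESHOLD = 0.8


def evaluate(sign_in: SignIn) -> Decision:
    # A very high risk score blocks the sign-in outright.
    if sign_in.risk_score >= DENY_RISK_THRESHOLD:
        return Decision.DENY

    on_trusted_network = any(
        ip_address(sign_in.source_ip) in net for net in TRUSTED_NETWORKS
    )
    # Any single matching condition triggers a second-factor challenge.
    if (not on_trusted_network
            or not sign_in.device_managed
            or sign_in.risk_score >= MFA_RISK_THRESHOLD):
        return Decision.REQUIRE_MFA

    return Decision.ALLOW
```

In this sketch a low-risk sign-in from a managed device on the corporate network passes without a challenge, while the same user on an unmanaged device or an unknown network is asked for a second factor.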

What challenges must be matched

Table 2-4 lists what strategies are used to overcome specific challenges and which Microsoft solution

you can employ to implement a specific strategy.

Table 2-4: Cloud security challenge matrix

| Challenges | Strategy | Solution |
| --- | --- | --- |
| Phishing reliably gains foothold in environment; Credential theft allows traversal within environment | Time-of-click (versus time-of-send) protection and attachment detonation; Integrated intelligence, reporting, policy enforcement; Securing privileged access roadmap to protect Active Directory and existing infrastructure | Microsoft Office 365 Advanced Threat Protection; Azure Active Directory Identity Protection, Conditional Access; Advanced Threat Analytics |
| Shadow IT; SaaS management | Discover SaaS usage; Investigate current risk posture; Take control to enforce policy on SaaS tenants and data | Cloud App Security |
| Limited visibility and control of sensitive data; Data classification is large and challenging | Protect data anywhere it goes; Bring or hold your own key; Support most popular formats; Integration with existing Data Loss Prevention (DLP) systems | Azure Information Protection and Azure Rights Management; Edge DLP; Endpoint DLP |
| Provide secure PCs and devices for sensitive data; Manage and protect data on noncorporate devices | Provide a great user experience, hardware-based security, and advanced detection + response capabilities; Mobile Device Management (MDM) and Mobile App Management (MAM) of popular devices via Intune; Policy enforcement via Conditional Access | Windows 10; Intune MDM/MAM, Conditional Access; System Center Configuration Manager + Intune |
| Protect broad attack surface that includes applications, infrastructure, management and administrative practices | Protect Privileged Identities: achieve least privilege with JEA and JIT, and protect credentials in use with Credential Guard and Remote Credential Guard; Harden Private Cloud Fabric: restrict what applications can run on servers (Device Guard), and protect applications with exploit mitigations (Control Flow Guard) and antimalware (Windows Defender); Secure workloads in the cloud and on-premises: minimize attack surface (Nano) and VM grade isolation (Hyper-V containers), and use Shielded VMs to provide isolation and integrity for sensitive workloads | Active Directory MIM PAM; Implement PAW Pattern; Credential Guard; Control Flow Guard; Windows Defender |
| Increased complexity by adding cloud datacenters to existing on-premises infrastructure; Limited IT security knowledge and tooling to secure cloud/hybrid infrastructure | Broad and deep visibility: get insights into security of your hybrid cloud infrastructure and workloads; Provide familiar capabilities: via Security Information and Event Management (SIEM) integration and security capabilities in Azure Marketplace; Start with high security: secure platform, rapidly find and fix basic security issues, critical capabilities | Log Analytics Security Center, Threat Protection, and Threat Detection; Security Appliances from Azure Marketplace; DDoS attack mitigation, Backup and Site Recovery, Network Security Groups, Disk and Storage Encryption, Azure Key Vault, Azure Antimalware, SQL Encryption, Firewall, Secure DevOps Kit for Azure, and Application Insights |

A Microsoft cybersecurity architect can provide expert advice and help you to build your security

roadmap and support the successful integration into your organization. Table 2-5 lists the available

resources.

Table 2-5: Identity security perimeter resources

Topic

Resource

Microsoft Data Guard

https://www.microsoft.com/store/p/data-guard/9nblggh0j1ps

Microsoft Control Flow

https://msdn.microsoft.com/library/windows/desktop/mt637065(v=vs.85).aspx

Shielded VMs

https://docs.microsoft.com/system-center/vmm/guarded-deploy-vm

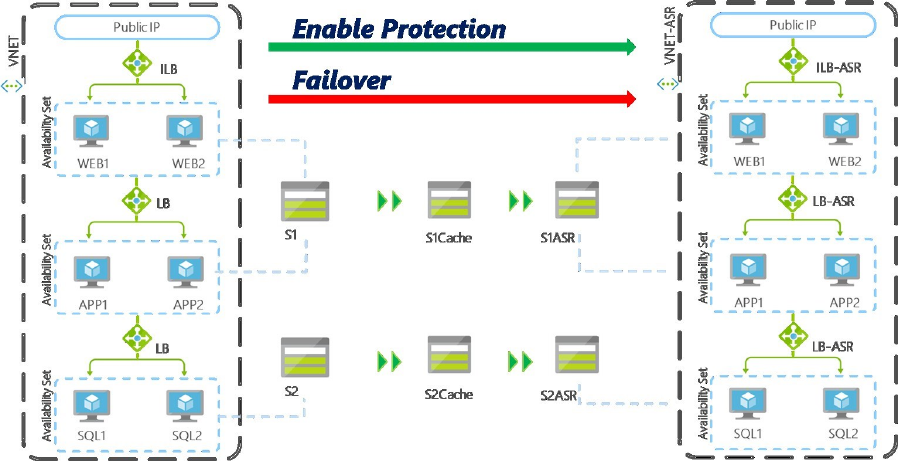

Azure Site Recovery

https://docs.microsoft.com/azure/site-recovery/

Azure Backup

https://docs.microsoft.com/azure/backup/

Key Vault

https://docs.microsoft.com/azure/key-vault/key-vault-whatis

Azure Antimalware

https://docs.microsoft.com/azure/security/azure-security-antimalware

Security Center

https://docs.microsoft.com/azure/security-center/security-center-intro

Privileged Identity

Management

https://blogs.technet.microsoft.com/tangent_thoughts/2016/10/26/azure-pim-

initial-walkthrough-and-links-aka-msazurepim/

DLP

https://blogs.msdn.microsoft.com/mvpawardprogram/2016/01/13/data-loss-

prevention-dlp-in-sharepoint-2016-and-sharepoint-online/

Office 365 Advanced

Threat Protection

https://blogs.microsoft.com/firehose/2015/04/08/introducing-exchange-online-

advanced-threat-protection/#sm.00011o28ibuuaefxph31q9rbgj49j

System Center

Configuration Manager

https://www.microsoft.com/cloud-platform/system-center-configuration-

manager

Intune MDM

https://docs.microsoft.com/intune/device-lifecycle

22 CHAPTER 2 | Architecture

Topic

Resource

Intune MAM

https://blogs.technet.microsoft.com/cbernier/2016/01/05/microsoft-intune-

mobile-application-management-mam-standalone/

Azure AD Conditional

Access

https://docs.microsoft.com/azure/active-directory/active-directory-conditional-

access

Windows 10 Security

https://docs.microsoft.com/windows/threat-protection/overview-of-threat-

mitigations-in-windows-10

Application Insights

https://docs.microsoft.com/azure/application-insights/app-insights-overview

Data protection

Data is one of the most valuable assets that companies have, and it is critical that this asset is protected against unauthorized access and against attackers who would hold it hostage. Access is controlled by giving authenticated users the authorization to read, change, or delete data. Not all data is equally critical: information can range from publicly available to strictly confidential.

Data classification

Most companies already have a data classification policy in place. You will need to understand how

using Azure as an application platform will affect this policy.

Table 2-6 presents examples of data classes that you might want to differentiate.

Table 2-6: Data classification example

| Class | Example |
| --- | --- |
| Public | Announced corporate financial data |
| Low business impact | Age, gender, or ZIP code |
| Moderate business impact | Address, operating procedures |
| High business impact | Design and functional specifications |

For each data protection class, your policy should define at least the following:

• To which data class a given policy applies

• The party responsible for data protection

• Precautions to protect data against any unauthorized or illegal access by internal or external parties

• How data should be stored and backed up

• How you ensure data is accurate and up to date

• How long data will be stored

• Under what circumstances you will disclose data and to whom

• How to keep individuals informed about data you hold

• Where data will be stored or transferred to; for instance, countries having adequate data protection laws

• What to do in case of lost, corrupted, or compromised data

Review your data protection policy regularly (for instance, once every two years) to keep it up to date with new technologies and changes in legislation.
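As a rough illustration, the data classes from Table 2-6 and a few of the policy items listed above can be captured in a small data structure, which makes it easy to check that every class has a policy and that no policy is overdue for review. The field names, the `ProtectionPolicy` type, and the 730-day interval (taken from the "once every two years" example) are assumptions for this sketch, not part of any Azure service.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum
from typing import List, Tuple


class DataClass(Enum):
    PUBLIC = "Public"
    LOW = "Low business impact"
    MODERATE = "Moderate business impact"
    HIGH = "High business impact"


@dataclass(frozen=True)
class ProtectionPolicy:
    """Hypothetical record covering a subset of the policy items."""
    data_class: DataClass
    responsible_party: str
    retention_days: int
    allowed_regions: Tuple[str, ...]
    last_reviewed: date


# Assumed review interval: roughly two years.
REVIEW_INTERVAL_DAYS = 2 * 365


def review_due(policy: ProtectionPolicy, today: date) -> bool:
    # A policy is due when the review interval has fully elapsed.
    return (today - policy.last_reviewed).days >= REVIEW_INTERVAL_DAYS


def classes_without_policy(policies: List[ProtectionPolicy]) -> List[DataClass]:
    # Report data classes that no policy covers yet.
    covered = {p.data_class for p in policies}
    return [c for c in DataClass if c not in covered]
```

Keeping the policy in a structured form like this also gives you a single place to record decisions such as permitted storage regions before you map them to Azure features.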

Table 2-7 lists the different features that Azure provides for you to implement your data protection

policies.

Table 2-7: Customer-configurable protection options in Azure

| Topic | Resource |
| --- | --- |
| Volume-level encryption | https://docs.microsoft.com/azure/security/azure-security-disk-encryption |
| Key management | https://azure.microsoft.com/resources/videos/azurecon-2015-encryption-and-key-management-with-azure-key-vault/ |
| SSL certificates | https://docs.microsoft.com/azure/app-service-web/web-sites-purchase-ssl-web-site |
| SQL Database encryption | https://docs.microsoft.com/sql/relational-databases/security/encryption/transparent-data-encryption-with-azure-sql-database |
| Azure Rights Management services | https://docs.microsoft.com/information-protection/understand-explore/what-is-azure-rms |
| Azure Information Protection | https://docs.microsoft.com/information-protection/understand-explore/what-is-information-protection |
| Azure Information Protection samples | https://github.com/Azure-Samples/Azure-Information-Protection-Samples |

Microsoft Azure Information Protection enforces the appropriate protection of documents and emails according to your data protection policies.

With Azure Information Protection, documents are classified at creation time (see Table 2-8). The document is encrypted from creation for the rest of its life cycle. When an authenticated user has the appropriate permissions according to the applied policy, the document is decrypted, and the user is able to open it, independent of the current location of the document.
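The access model described above can be sketched in a few lines. This is a toy model, not the Azure Information Protection SDK: `ProtectionLabel`, `ProtectedDocument`, and `open_document` are hypothetical names, and the `protected_body` field merely stands in for the encrypted content (no real encryption is performed). The point it illustrates is that the decision depends on the label and the user's identity, and never on where the file is stored.

```python
from dataclasses import dataclass
from typing import FrozenSet


@dataclass(frozen=True)
class ProtectionLabel:
    """Hypothetical label applied when the document is created."""
    name: str
    allowed_readers: FrozenSet[str]


@dataclass(frozen=True)
class ProtectedDocument:
    label: ProtectionLabel
    protected_body: str  # placeholder for ciphertext in this sketch


def open_document(doc: ProtectedDocument, user: str, authenticated: bool) -> str:
    # The label travels with the document, so the same check applies
    # whether the file sits on a share, a USB stick, or in the cloud.
    if not authenticated:
        raise PermissionError("user is not authenticated")
    if user not in doc.label.allowed_readers:
        raise PermissionError("user lacks permission under label "
                              + doc.label.name)
    return doc.protected_body
```

Note that the function takes no storage location as input, which mirrors the location independence of the protection.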

Table 2-8: Data classification resources

| Topic | Resource |
| --- | --- |
| Carnegie Mellon University Guidelines for Data Classification | http://www.cmu.edu/iso/governance/guidelines/data-classification.html |
| Microsoft Data Classification Wizard | https://www.microsoft.com/security/data/ |
| Microsoft Data Classification Toolkit | http://www.microsoft.com/download/details.aspx?id=27123 |
| Azure Rights Management | https://docs.microsoft.com/information-protection/understand-explore/what-is-azure-rms |
| Protecting data in Microsoft Azure | http://go.microsoft.com/fwlink/?LinkID=398382&clcid=0x409 |

Managing data location

When you create an Azure storage account, you define in which Azure datacenter region you want

your storage to be located. By choosing one of the following storage redundancy options, you

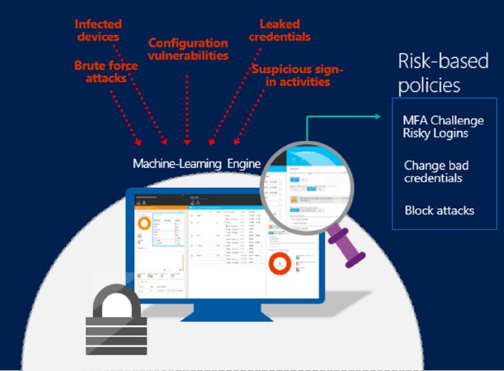

determine how often and to which location your data will be replicated: