CAT L1.4 BUSINESS MATHEMATICS Study Manual

User Manual:

Open the PDF directly: View PDF ![]() .

.

Page Count: 529 [warning: Documents this large are best viewed by clicking the View PDF Link!]

- BM CAT Study Manual Cover Contents and Syllabus RO'Neill 2 08 12

- BM CAT Study Manual Unit 1-3 RO'Neill 2 08 12

- BM CAT Study Manual Unit 4 RO'Neill 2 08 12

- BM CAT Study Manual Unit 5-6 RO'Neill 2 08 12

- BM CAT Study Manual Unit 7-8 RO'Neill 2 08 12

- BM CAT Study Manual Unit 9 RO'Neill 2 08 12

- Option 2

- Option 1

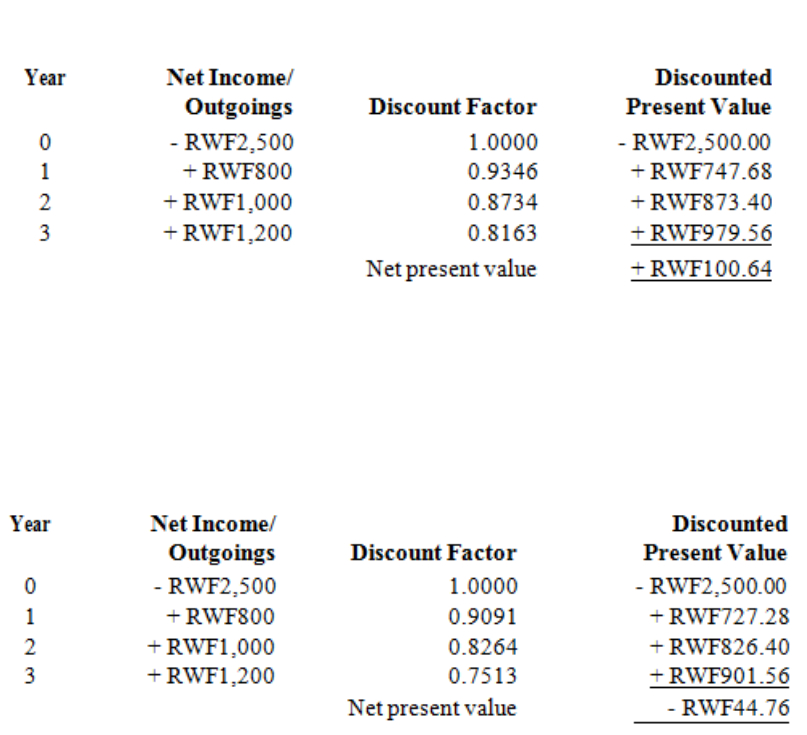

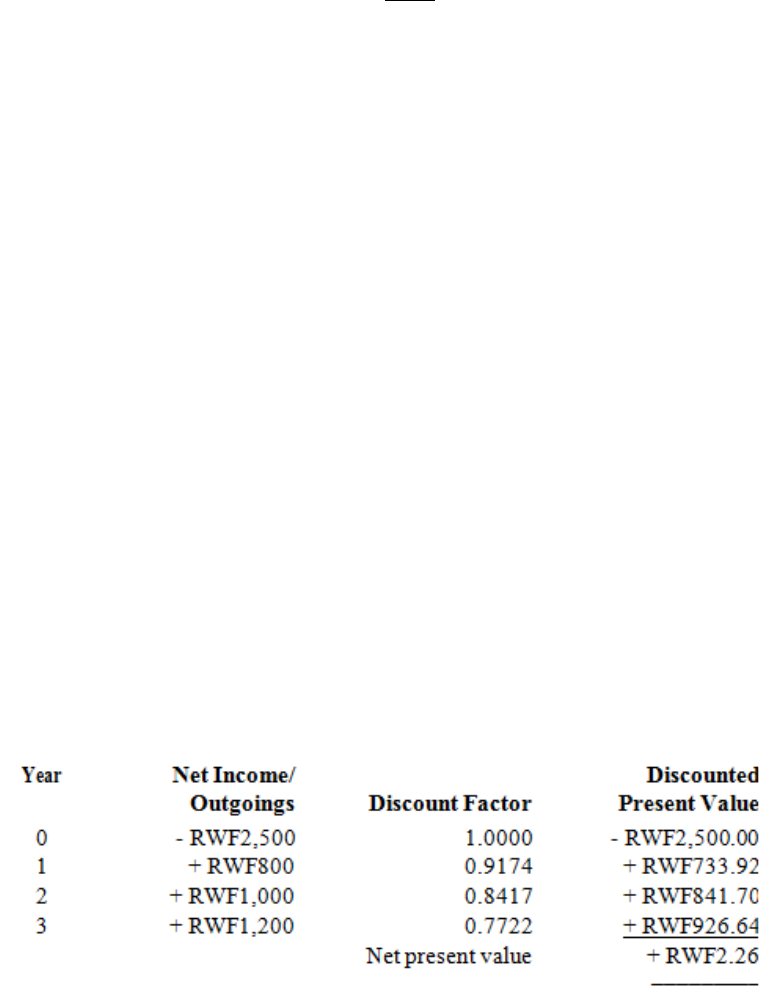

- You are receiving these returns and only investing RWF10000 so your Net Present Value is

- RWF10773.20 - RWF10000 = RWF773.20.

- Since the NPV is positive, you must be receiving more than 10% on the investment.

- What rate of return is the investment yielding?

- 11%, 12%, 18%??

- The rate of return the investment is yielding is called the Internal Rate of Return.

- This is perfect because it is a negative number which is roughly the same as the positive number done earlier.

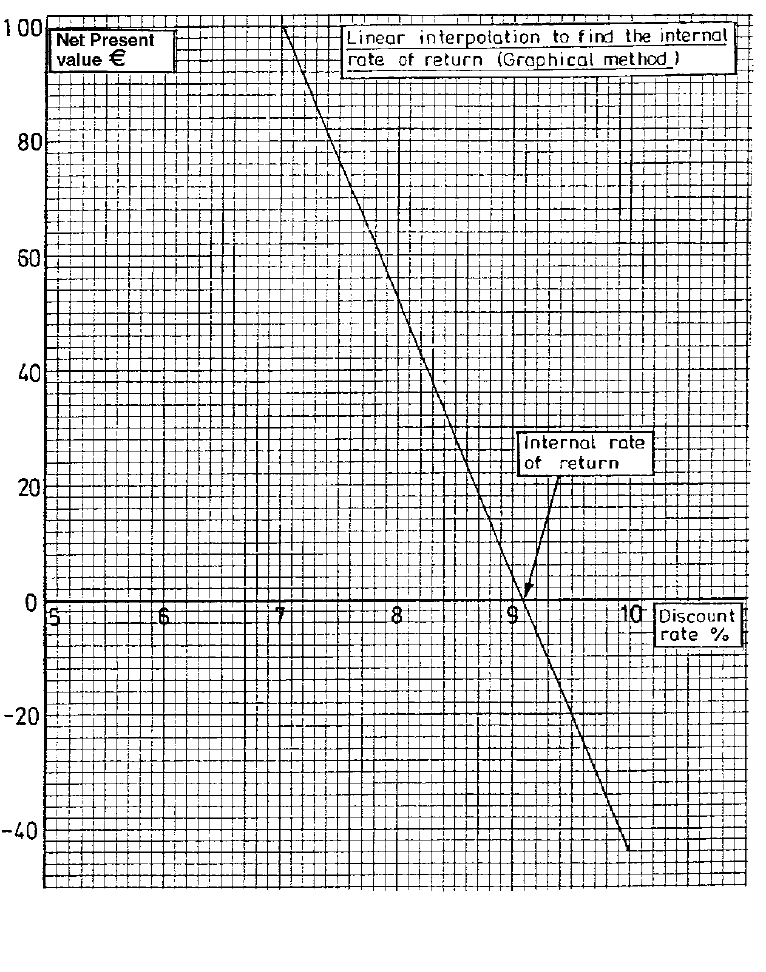

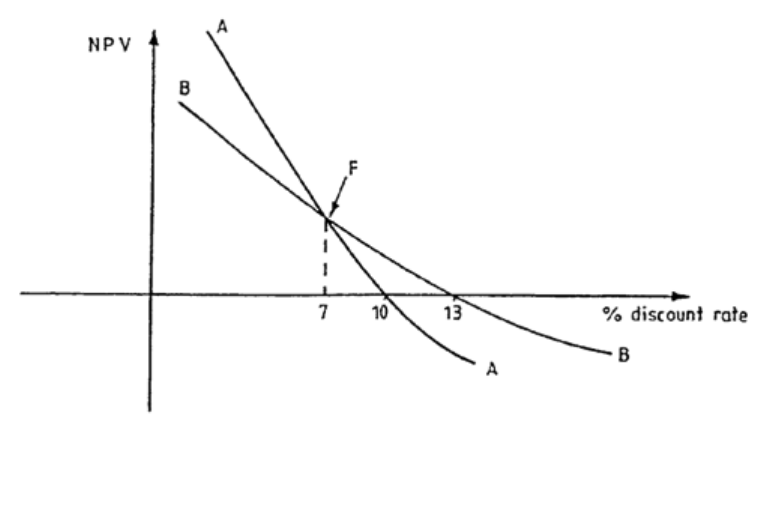

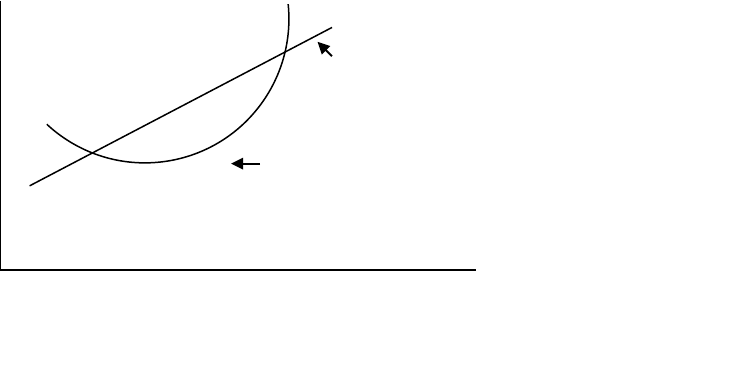

- The Internal Rate of Return is then estimated by drawing the following diagram:

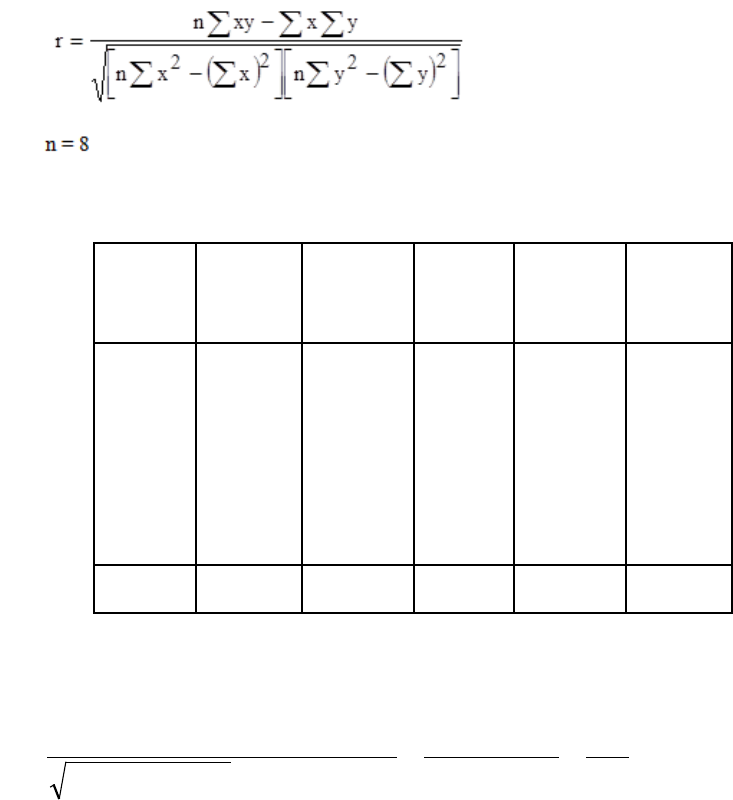

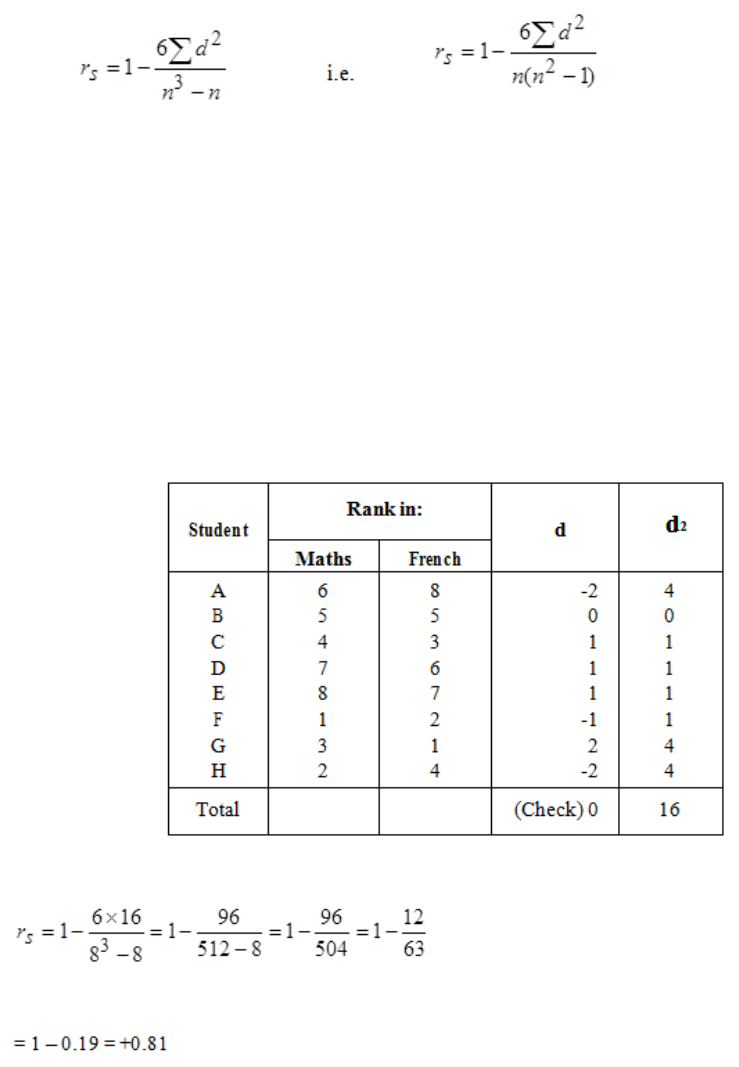

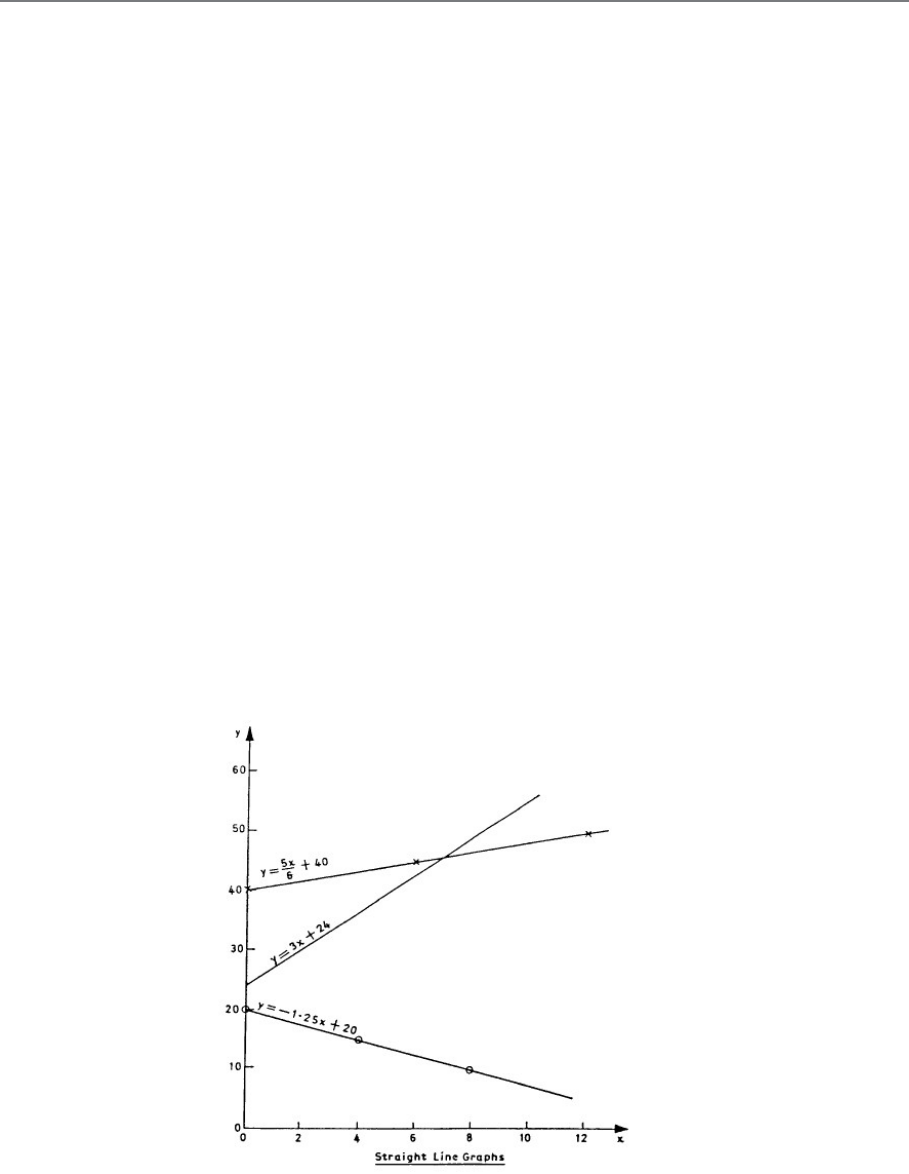

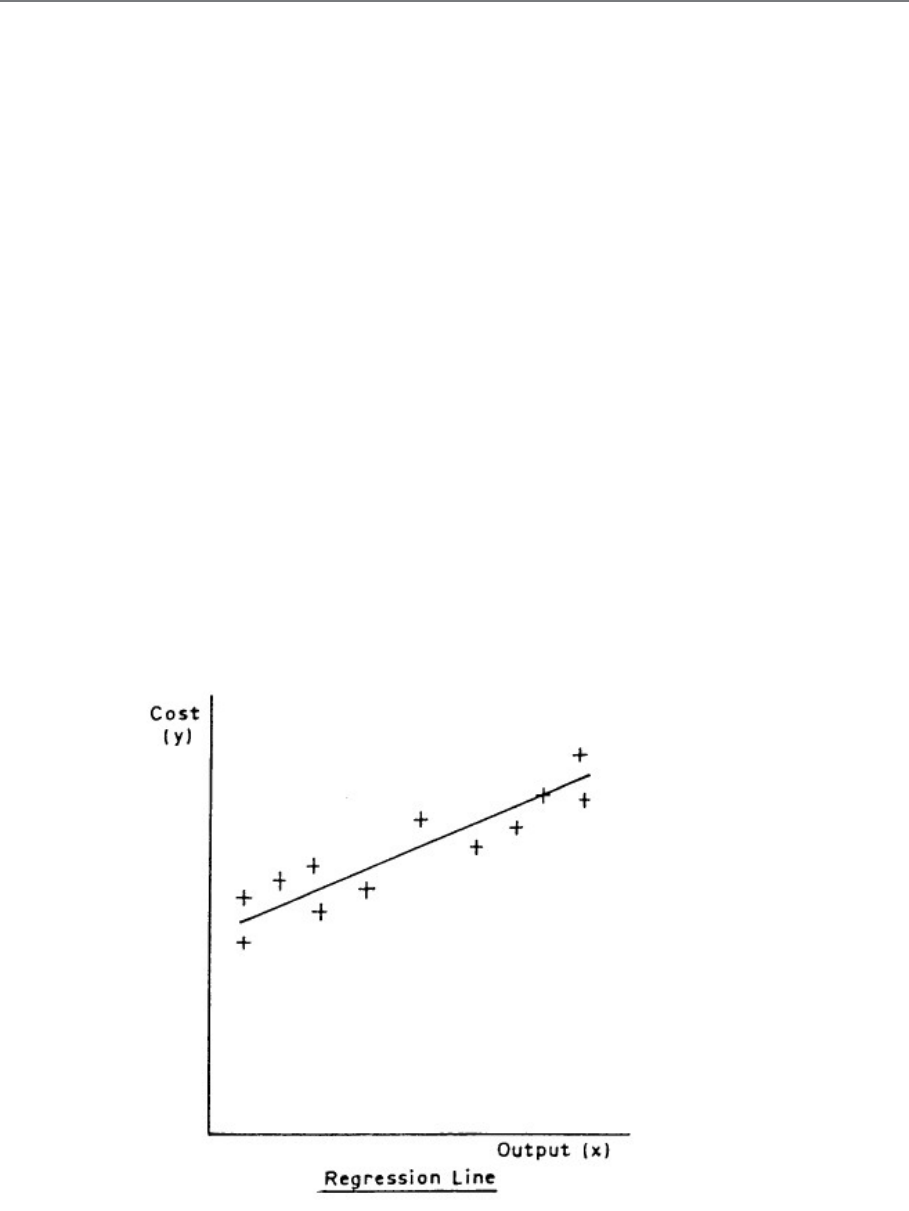

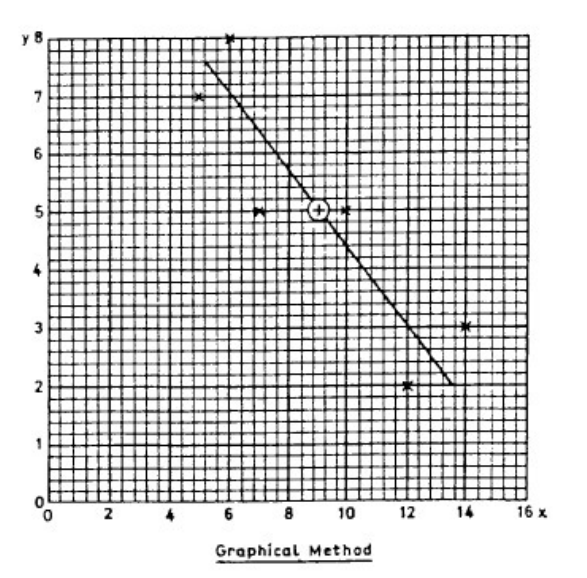

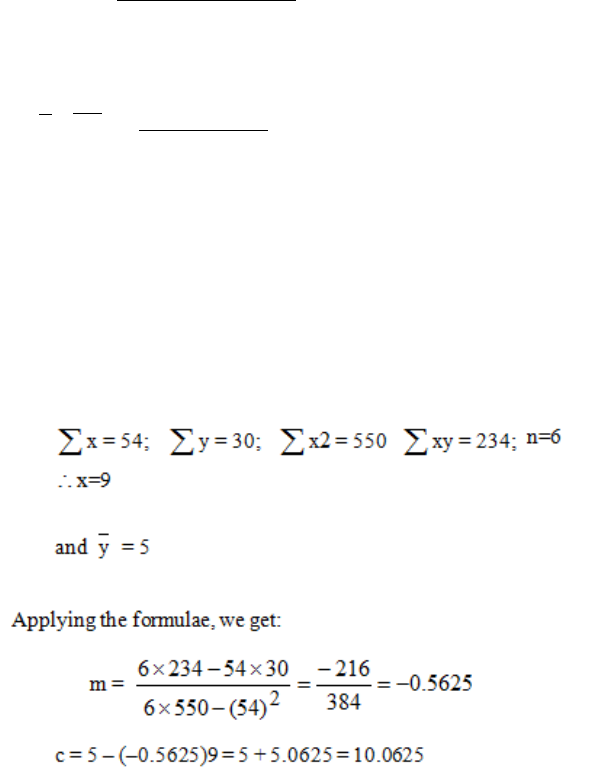

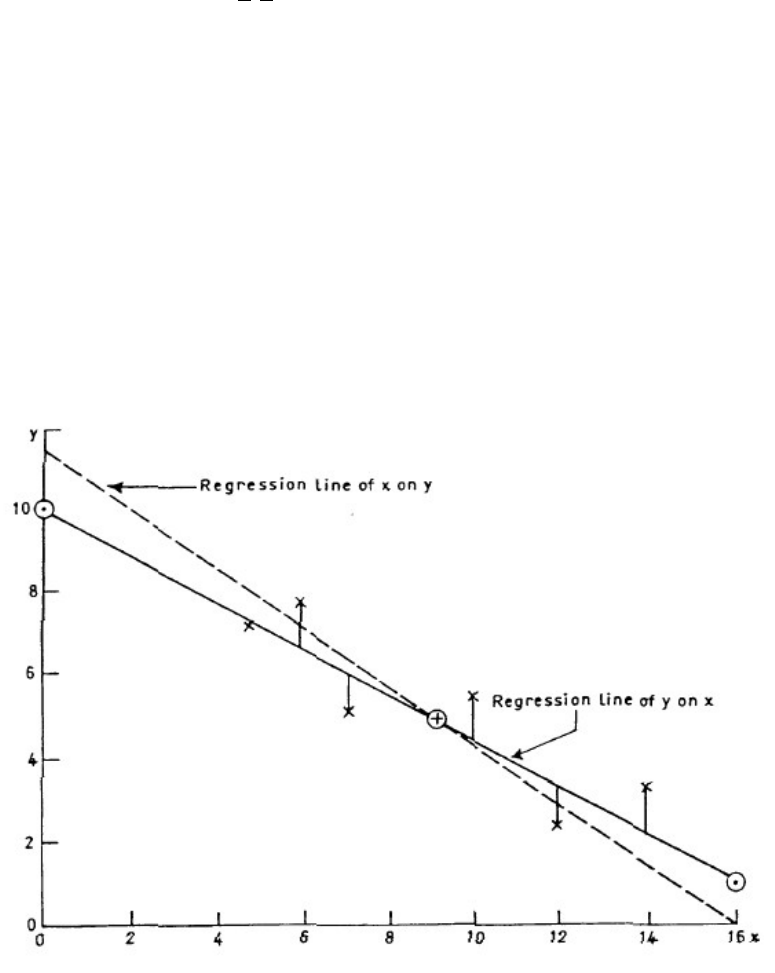

- Regression and Correlation

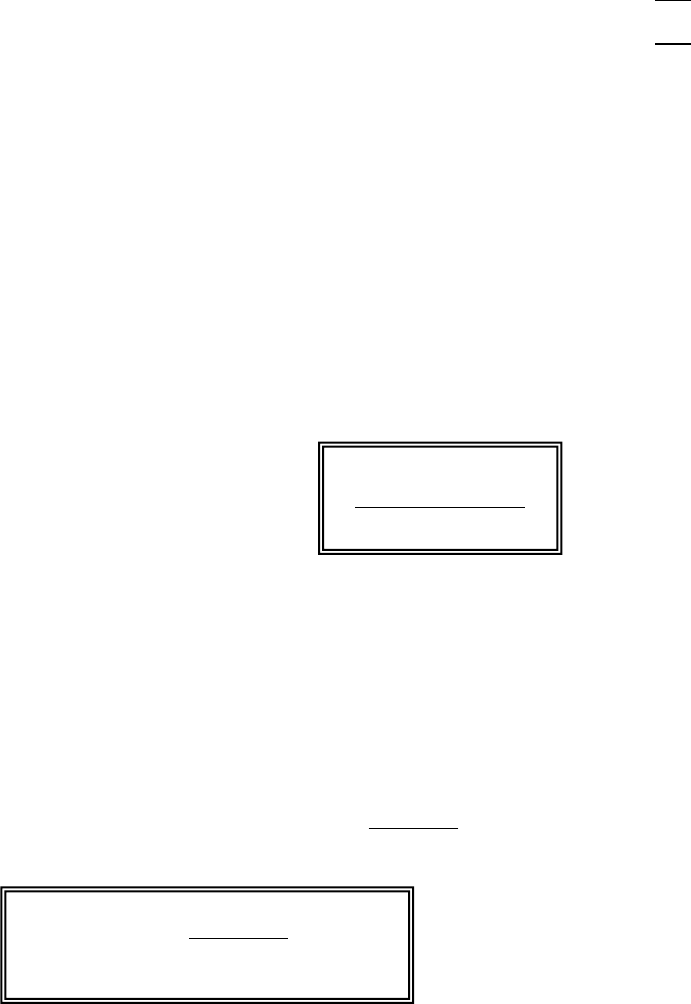

- Break-even Analysis

- Break-even Chart

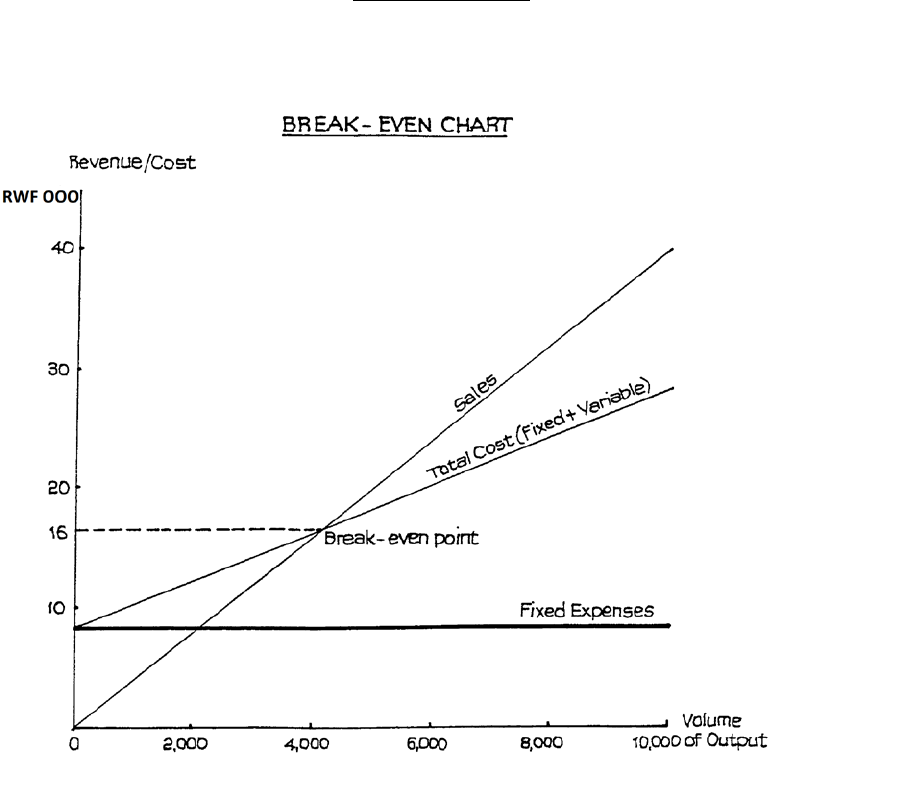

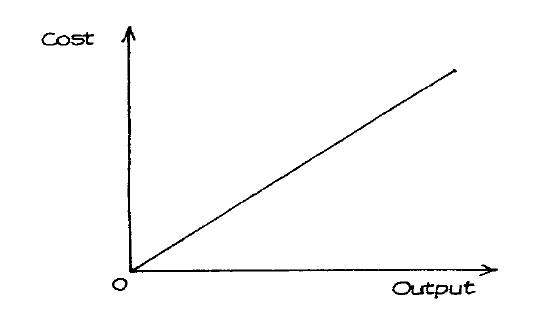

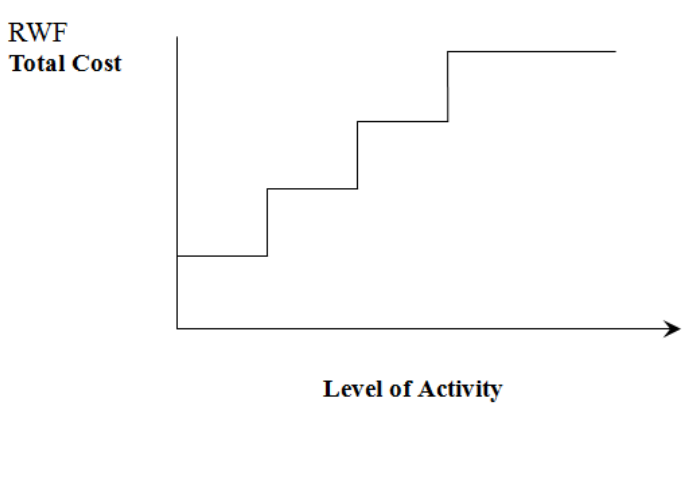

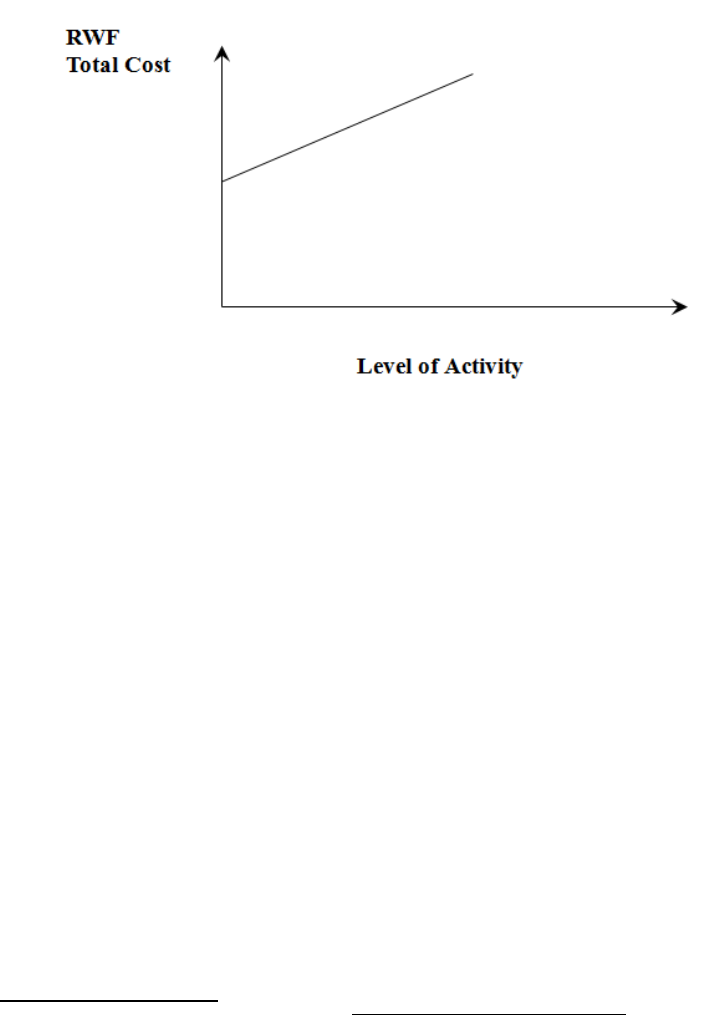

- Fixed, Variable and Marginal Costs

- Introduction

- BM CAT Study Manual Unit 10 RO'Neill 2 08 12

- BM CAT Study Manual Unit 11 RO'Neill 2 08 12

- BM CAT Study Manual Unit 12-13 RO'Neill 2 08 12

- BM CAT Study Manual Unit 14 RO'Neill 2 08 12

- BM CAT Study Manual Unit 15 RO'Neill 2 08 12

- BM CAT Study Manual Unit 16 RO'Neill 2 08 12

INSIDE COVER – BLANK

Page 1

© iCPAR

All rights reserved.

The text of this publication, or any part thereof, may not be reproduced or transmitted in any form

or by any means, electronic or mechanical, including photocopying, recording, storage in an

information retrieval system, or otherwise, without prior permission of the publisher.

Whilst every effort has been made to ensure that the contents of this book are accurate, no

responsibility for loss occasioned to any person acting or refraining from action as a result of any

material in this publication can be accepted by the publisher or authors. In addition to this, the

authors and publishers accept no legal responsibility or liability for any errors or omissions in

relation to the contents of this book.

INSTITUTE OF

CERTIFIED PUBLIC ACCOUNTANTS

OF

RWANDA

Level 1

L1.4 BUSINESS MATHEMATICS

First Edition 2012

This study manual has been fully revised and updated

in accordance with the current syllabus.

It has been developed in consultation with experienced lecturers.

Page 2

BLANK

Page 3

CONTENTS

Study

Unit

Title Page

Introduction to the Course

1: PROBABILITY

11

Estimating Probabilities

13

Types of Event

17

The Two Laws of Probability

19

Tree Diagrams

29

Binomial Distribution

39

Poisson Distribution

41

Venn diagrams

43

2: COLLECTION OF DATA

45

Collection of Data

47

Types of Data

49

Requirements of Statistical Data

51

Methods of Collecting Data

53

Interviewing

57

Designing the Questionnaire

59

Choice of Method

65

Pareto Distribution and the “80:20” Rule

67

3: TABULATION & GROUPING OF DATA

69

Introduction to Classification & Tabulation of Data

71

Forms of Tabulation

75

Secondary Statistical Tabulation

79

Rules for Tabulation

81

Sources of Data & Presentation Methods

85

4: GRAPHICAL REPRESENTATION OF INFORMATION

93

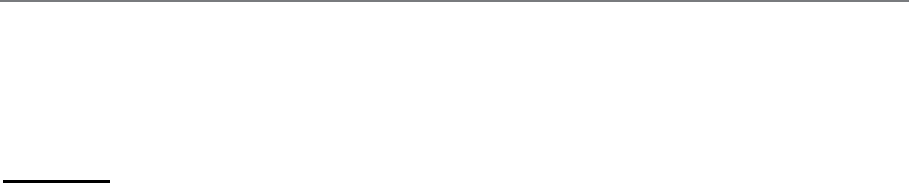

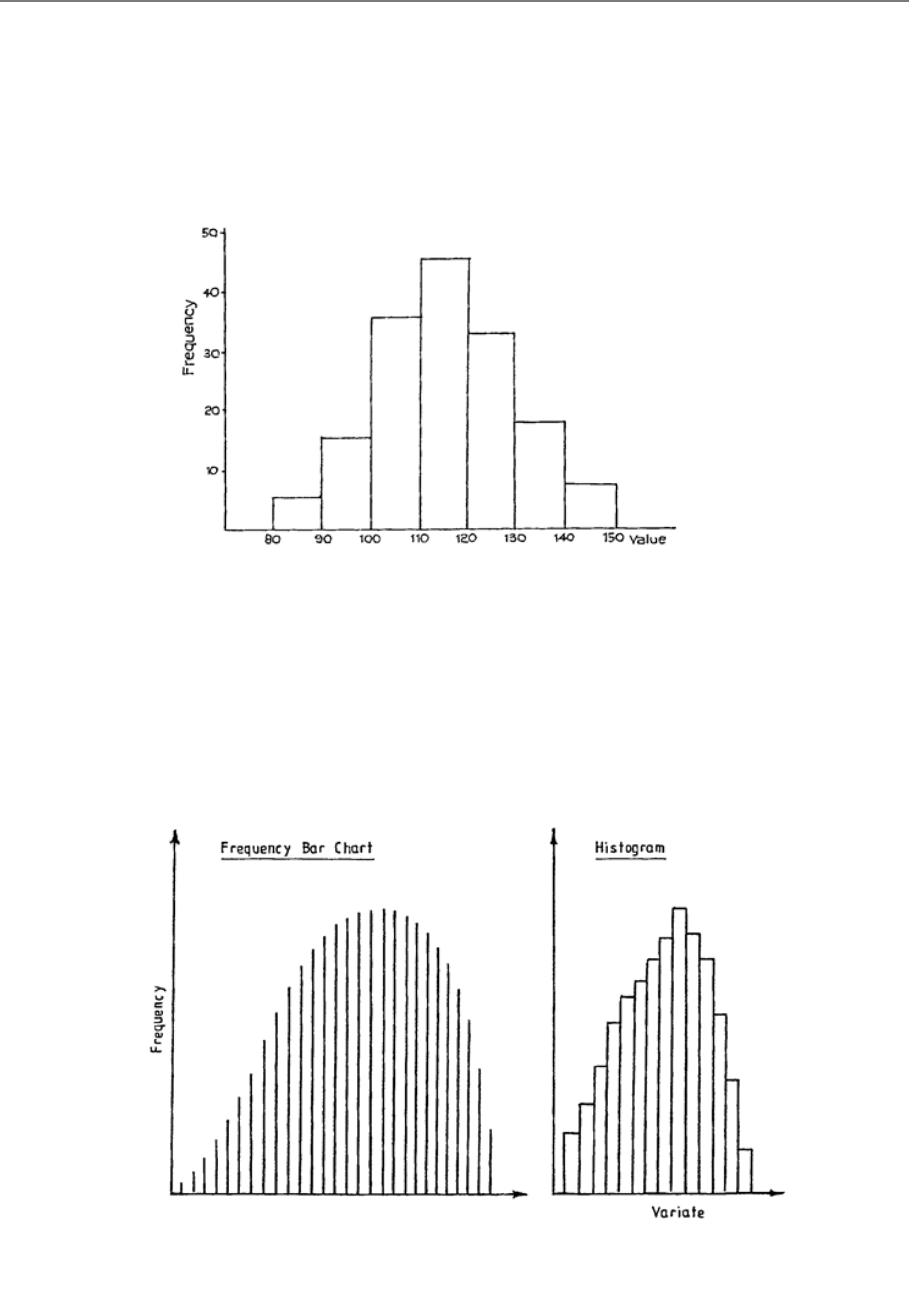

Introduction to Frequency Distributions

95

Preparation of Frequency Distributions

97

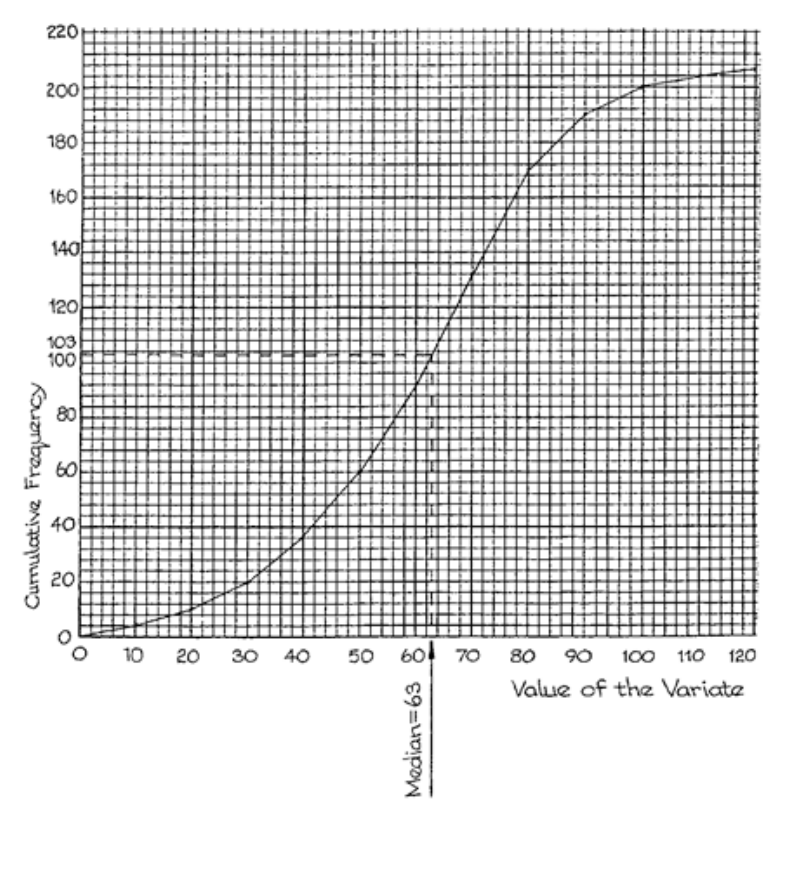

Cumulative Frequency Distributions

103

Relative Frequency Distributions

105

Graphical Representation of Frequency Distributions

107

Introduction to Other Types of Data Presentation

117

Pictograms

119

Pie Charts

123

Bar Charts

125

General Rules for Graphical Presentation

129

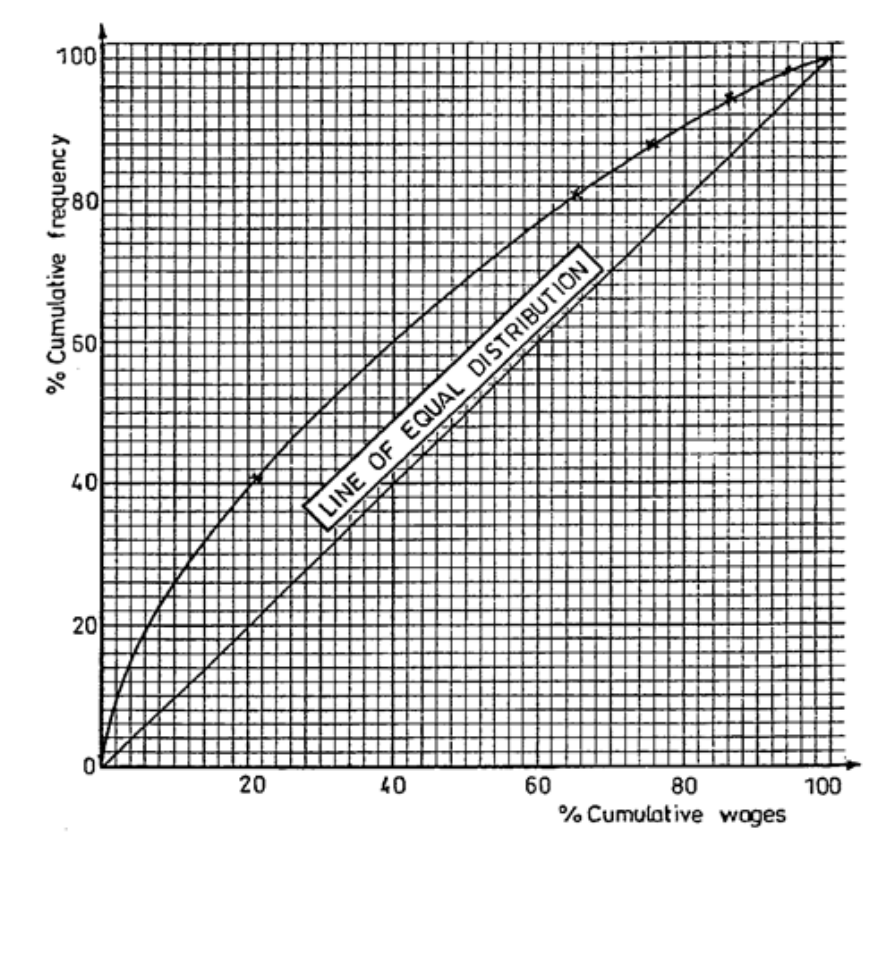

The Lorenz Curve

131

Page 4

Study

Unit

Title Page

5: AVERAGES OR MEASURES OF LOCATION

137

The Need for Measures of Location

139

The Arithmetic Mean

141

The Mode

153

The Median

159

6: MEASURES OF DISPERSION

165

Introduction to Dispersion

167

The Range

169

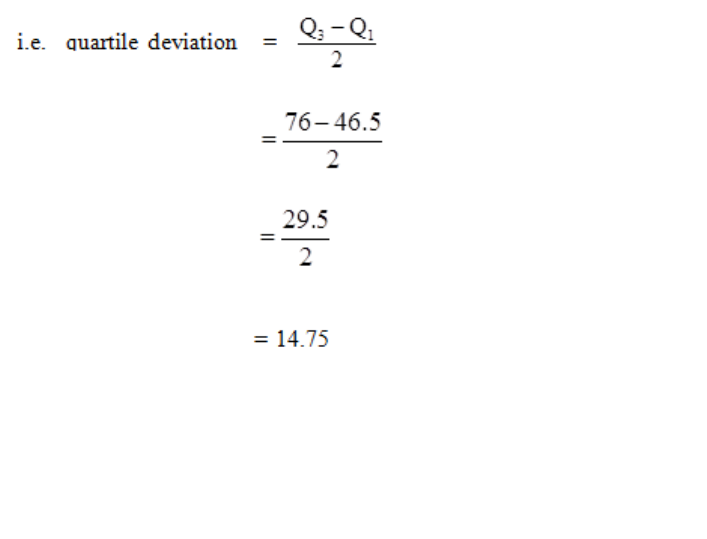

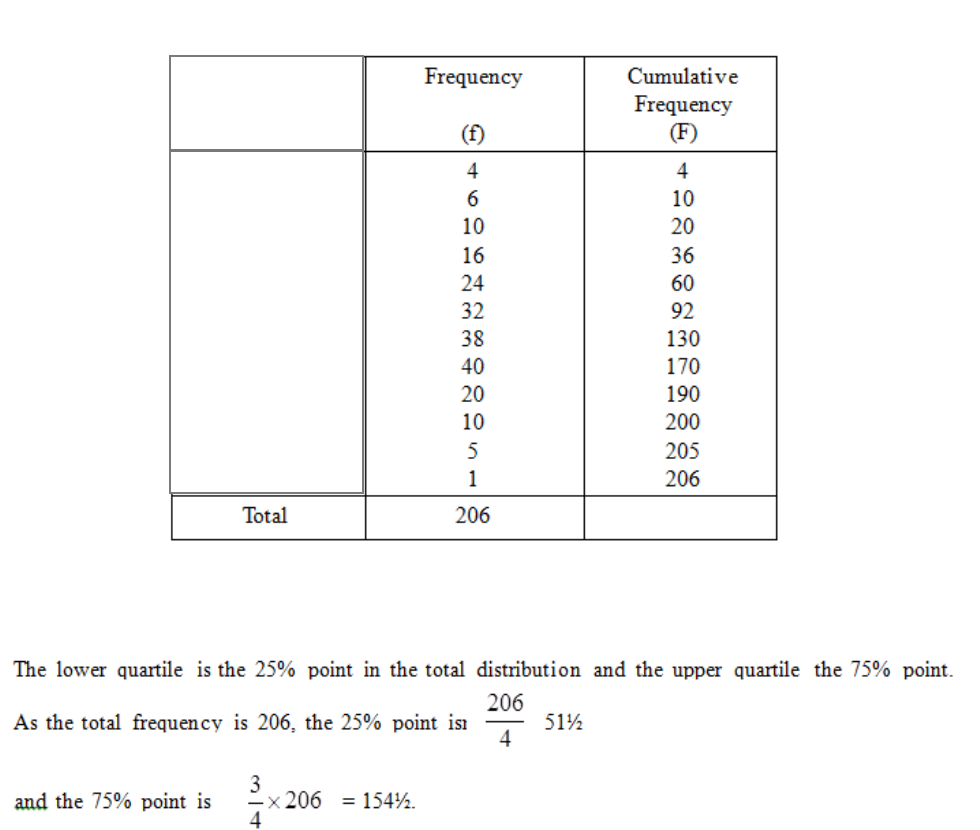

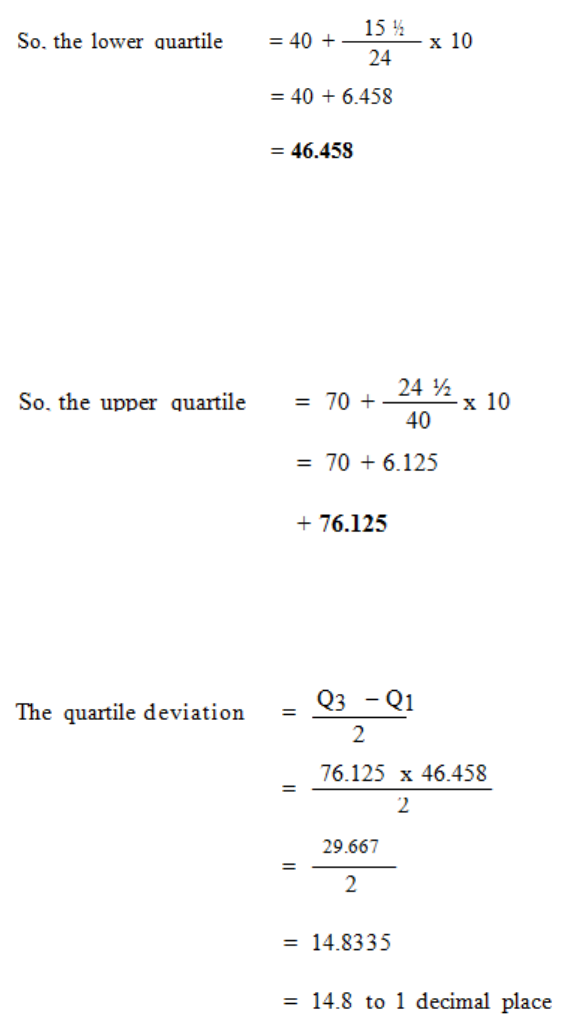

The Quartile Deviation, Deciles and Percentiles

171

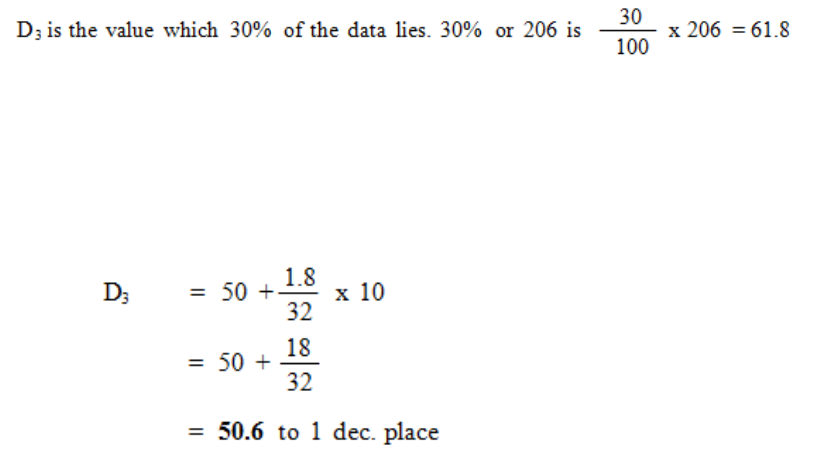

The Standard Deviation

177

The Coefficient of Variation

183

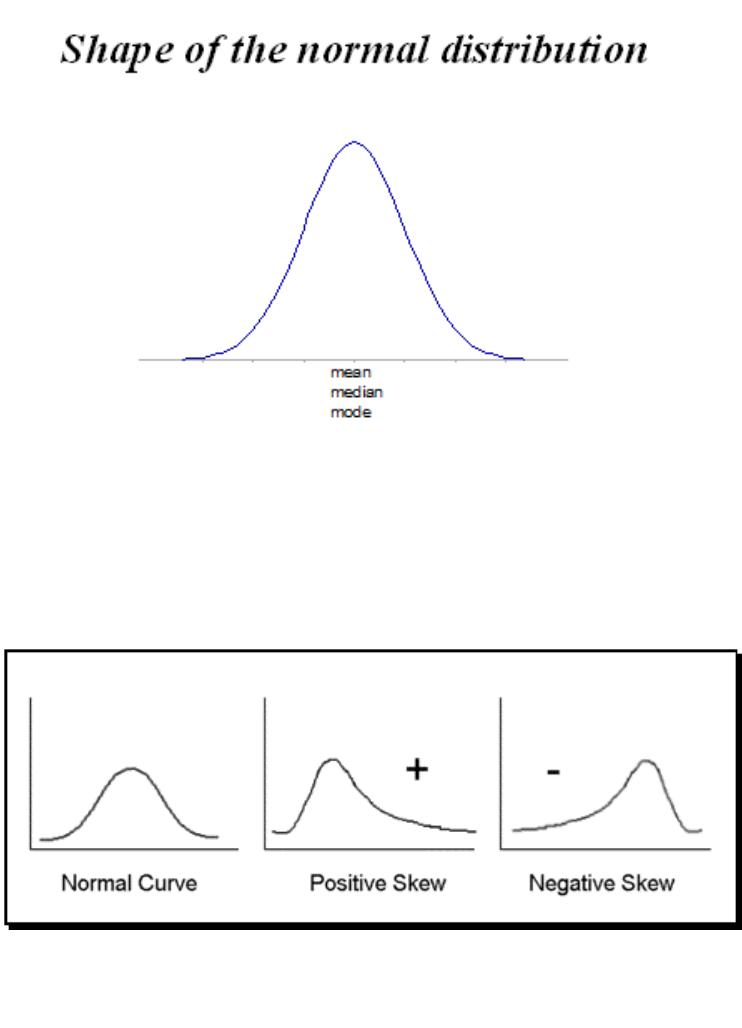

Skewness

185

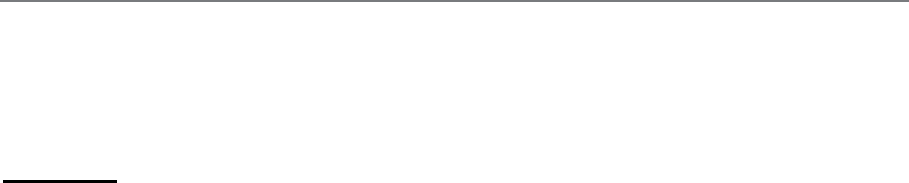

Averages & Measures of Dispersion

189

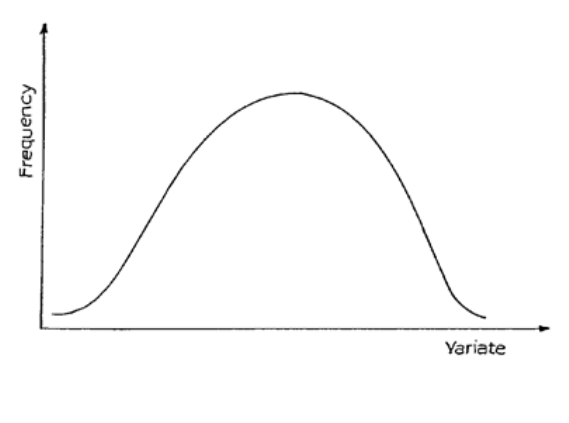

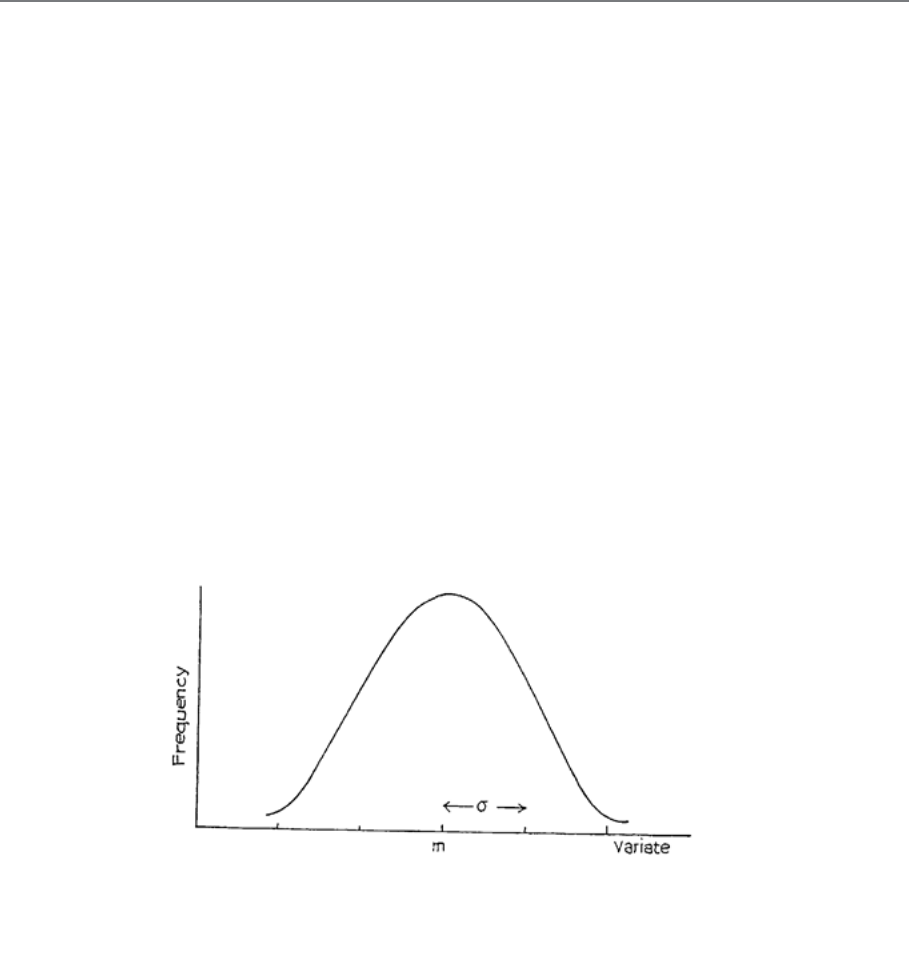

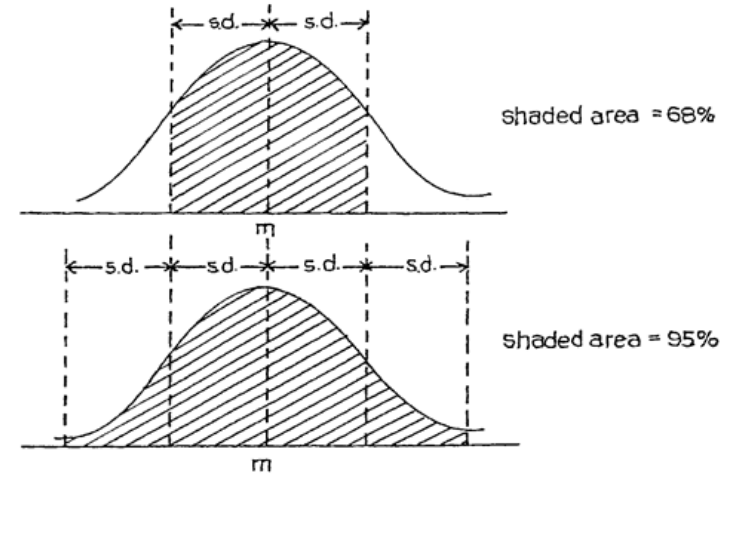

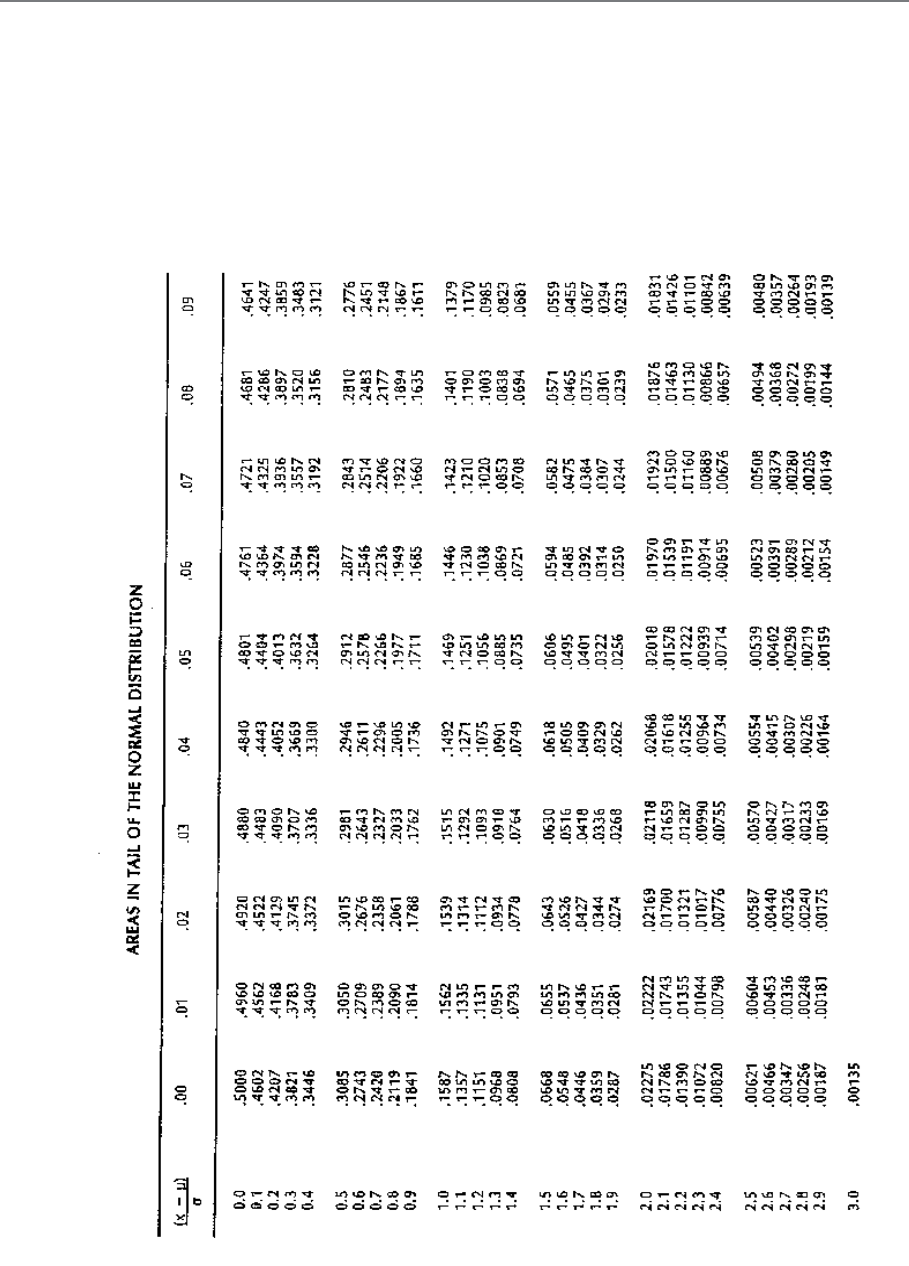

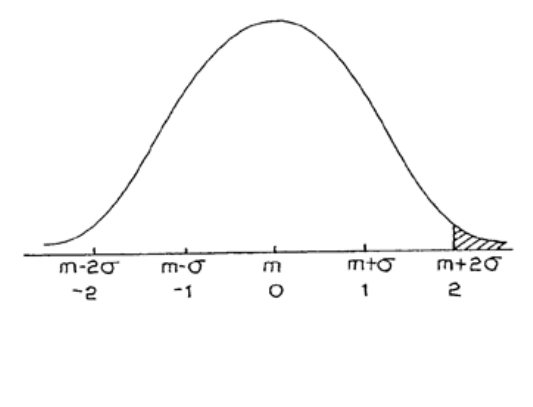

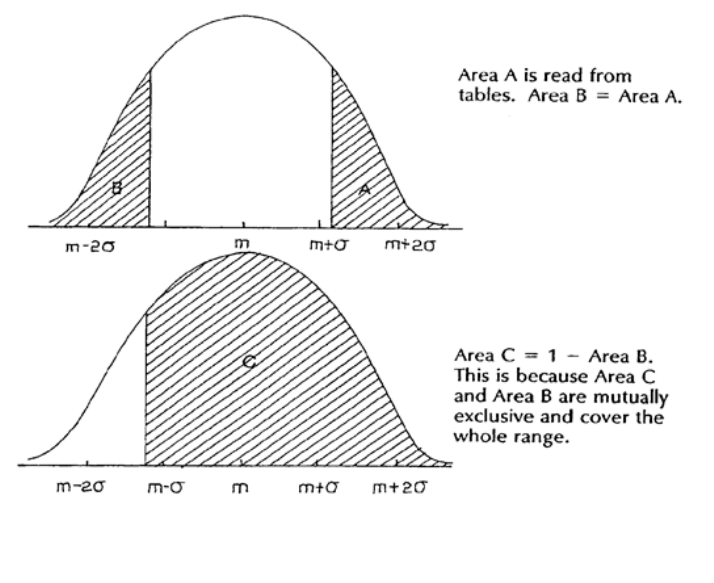

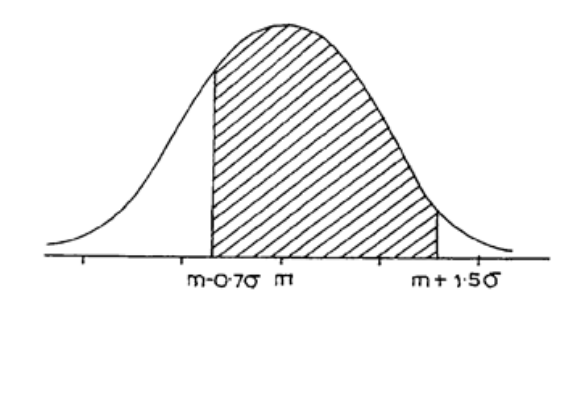

7: THE NORMAL DISTRIBUTION

203

Introduction

205

The Normal Distribution

207

Calculations Using Tables of the Normal Distribution

209

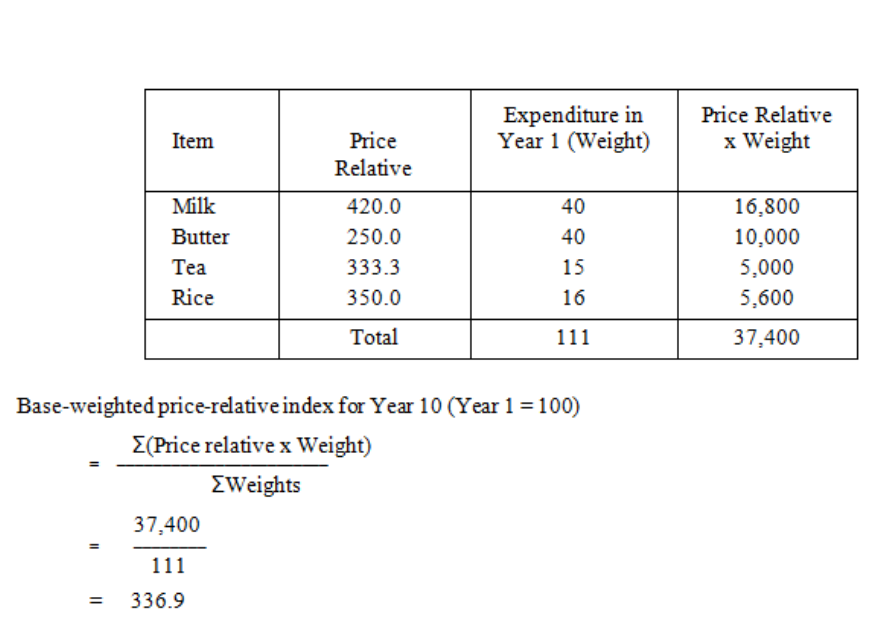

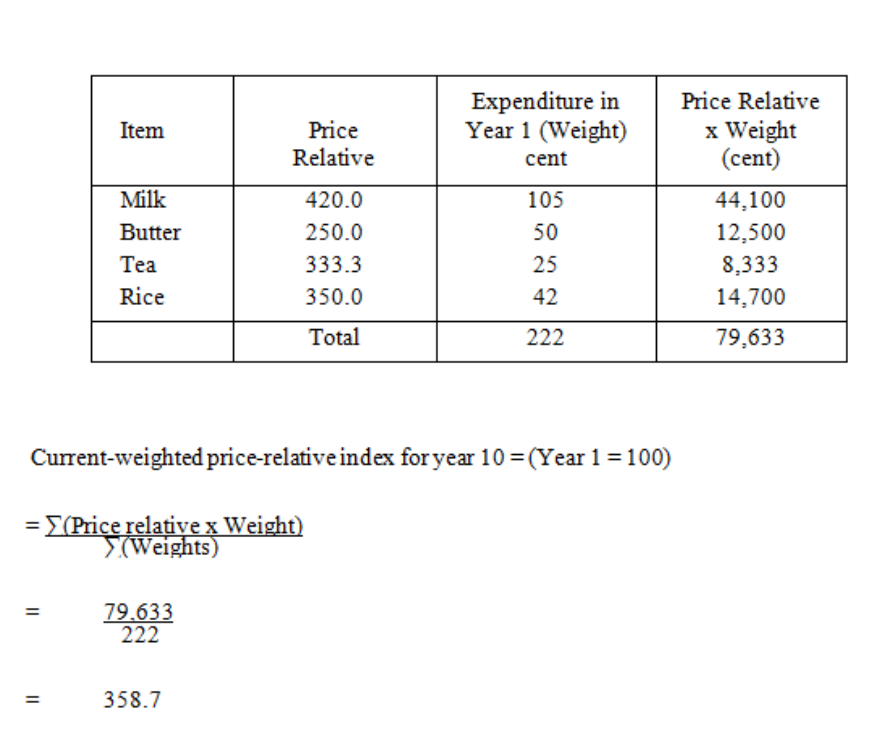

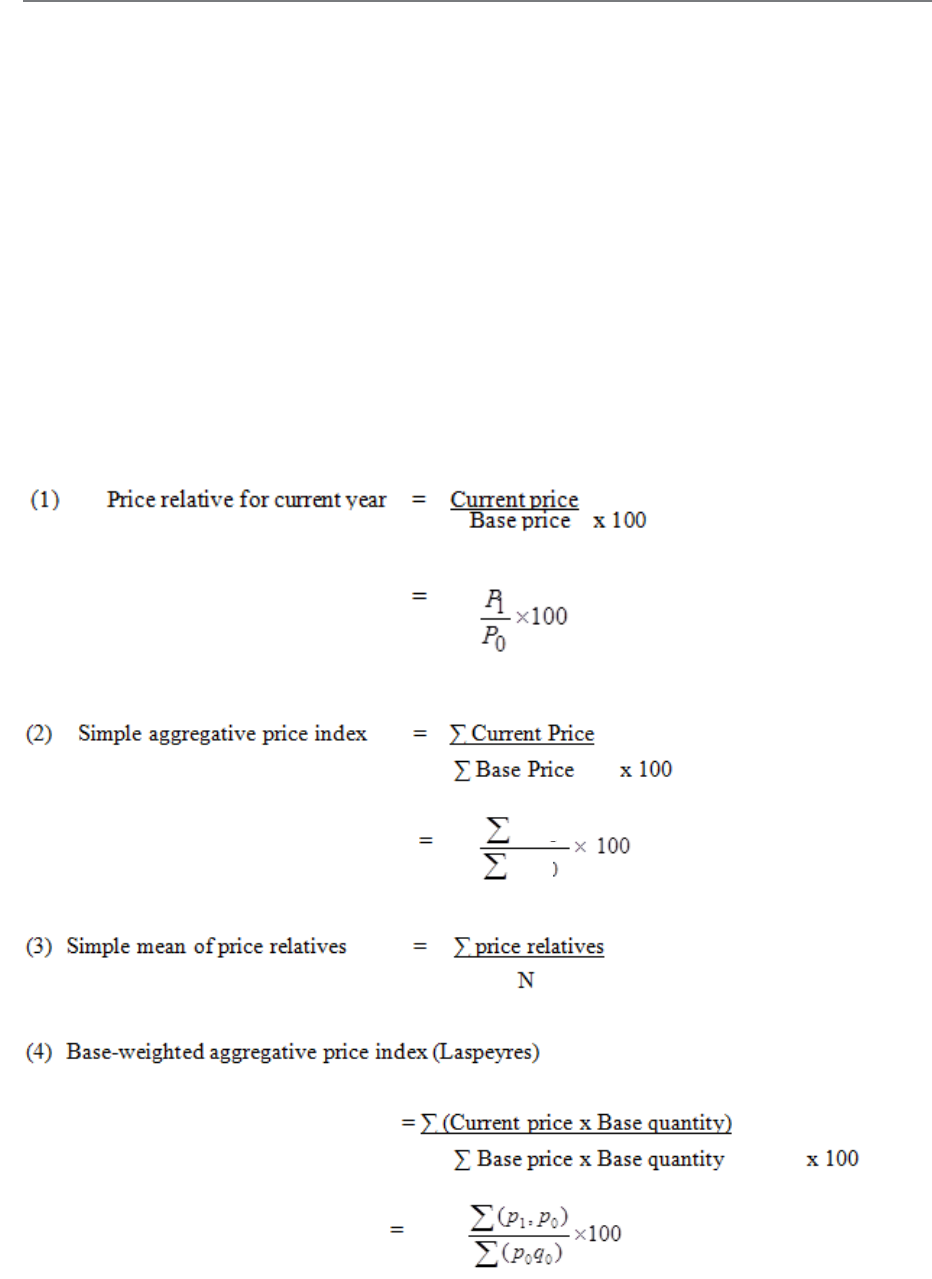

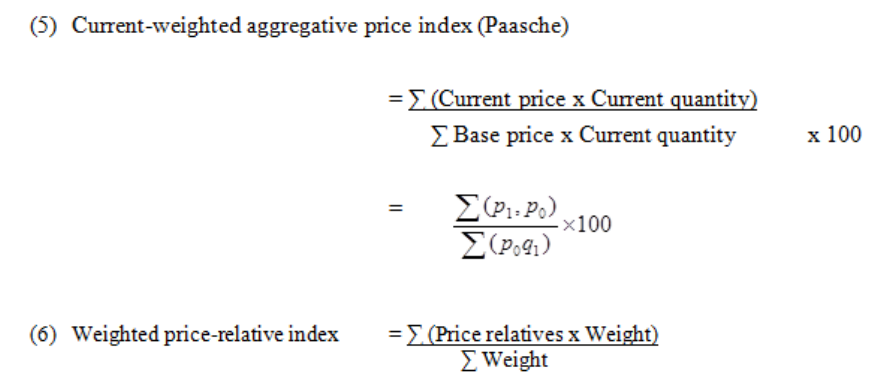

8: INDEX NUMBERS

215

The Basic Idea

217

Building Up an Index Number

219

Weighted Index Numbers

223

Formulae

229

Quantity or Volume Index Numbers

231

The Chain-Base Method

237

Deflation of Time Series

239

9: PERCENTAGES & RATIOS, SIMPLE & COMPOUND INTEREST,

DISCOUNTED CASH FLOW

245

Percentages

247

Ratios

249

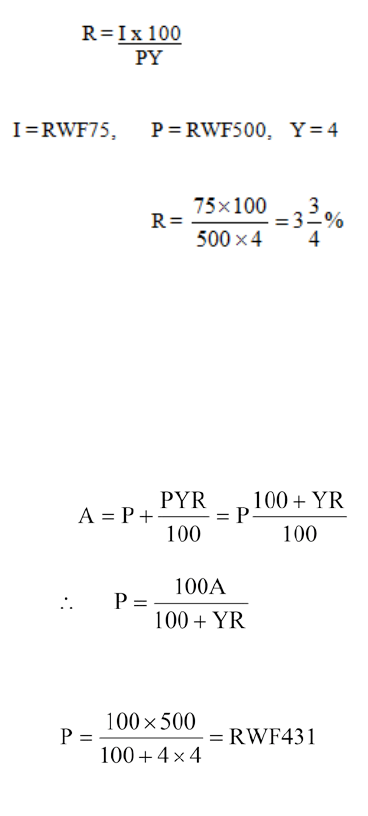

Simple Interest

253

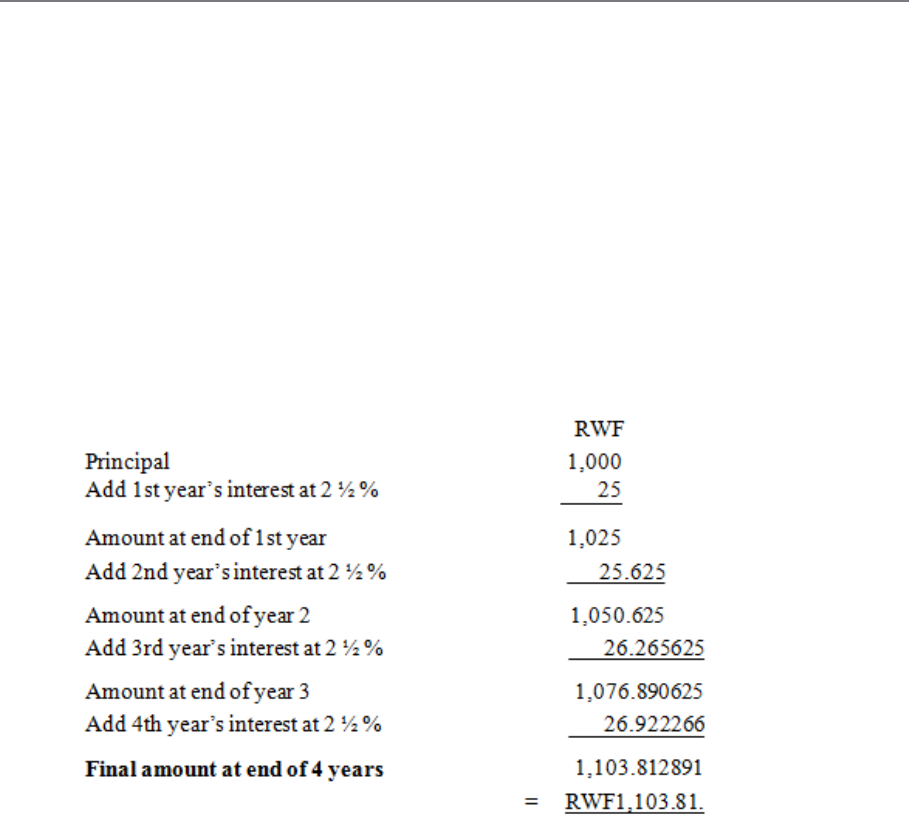

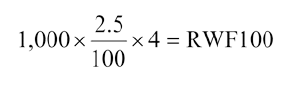

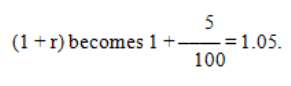

Compound Interest

257

Introduction to Discounted Cash Flow Problems

263

Two Basic DCF Methods

273

Introduction to Financial Mathematics

283

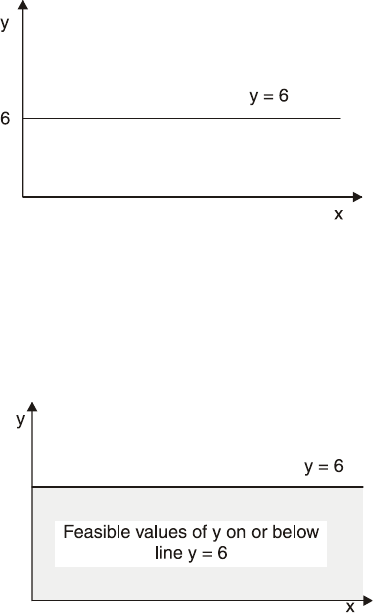

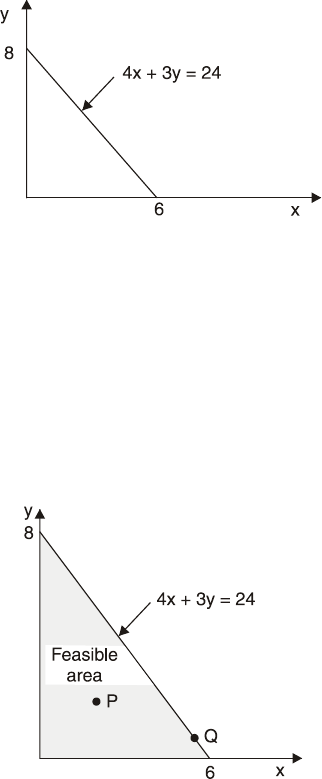

Manipulation of Inequalities

325

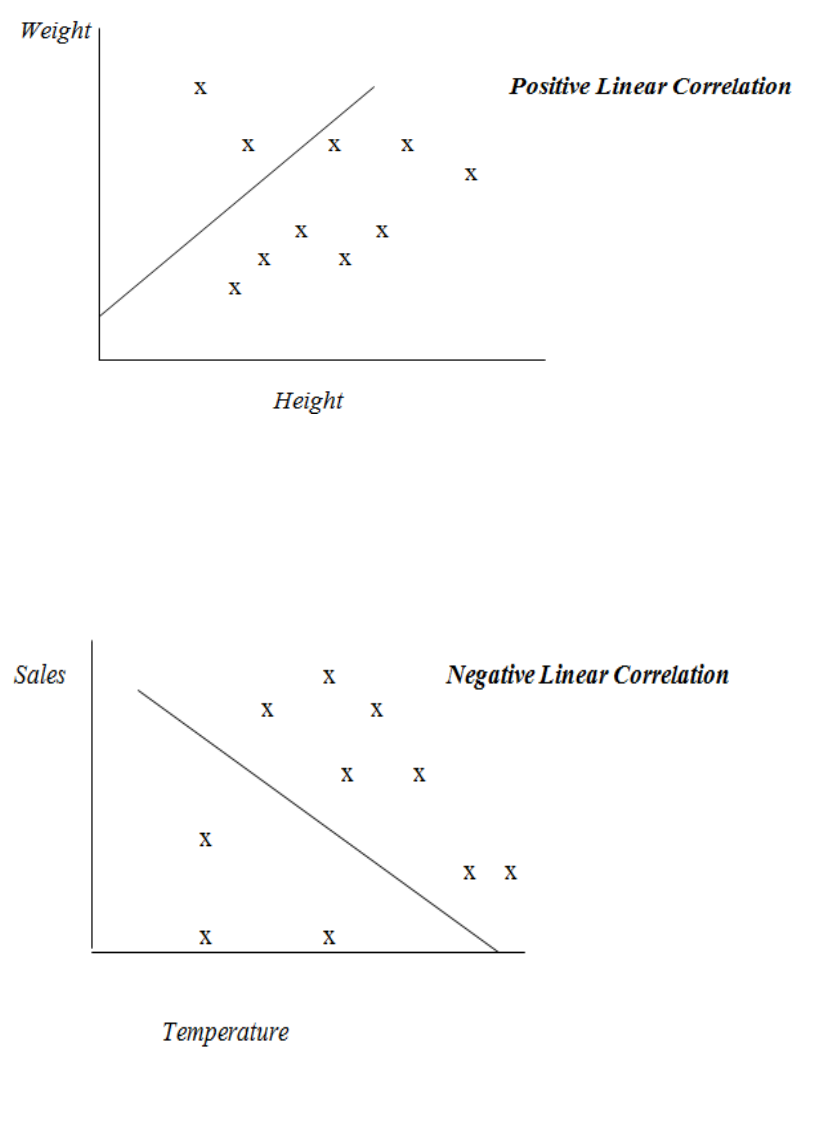

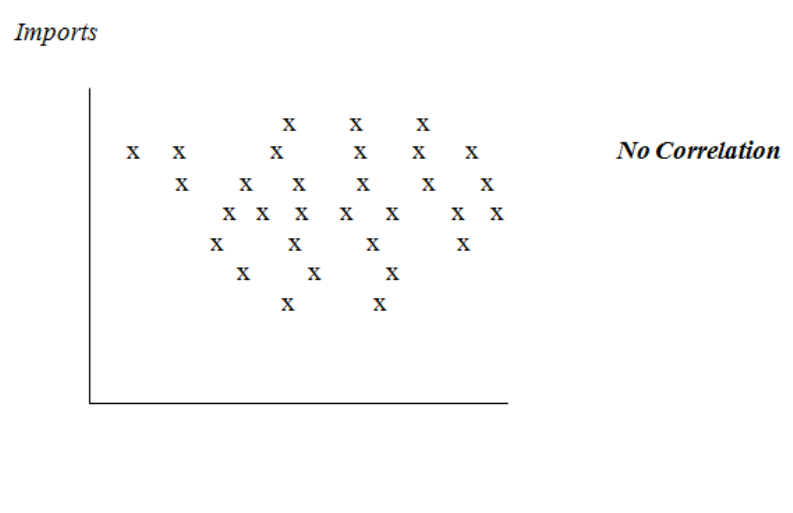

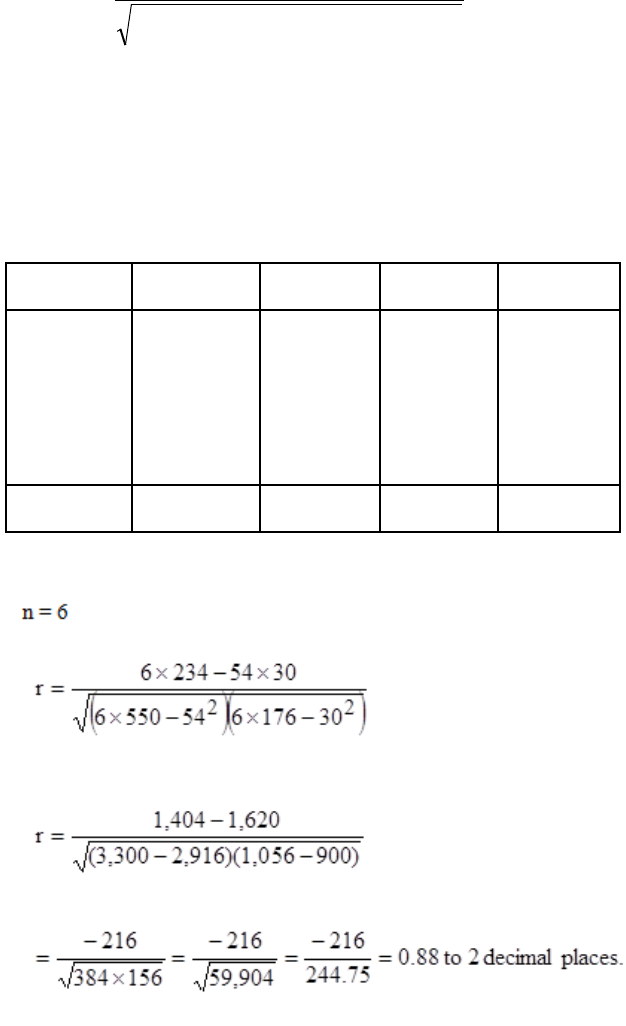

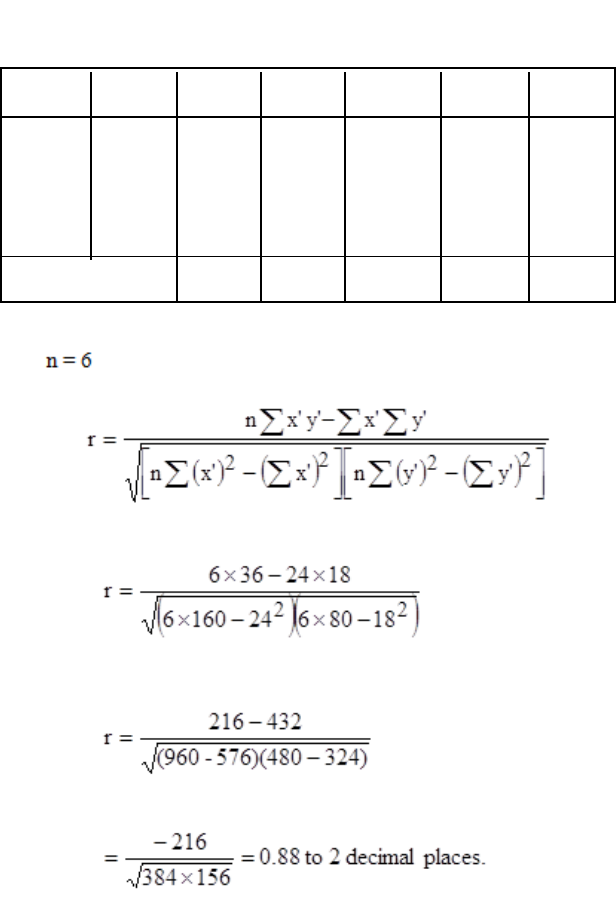

10: CORRELATION

327

General

329

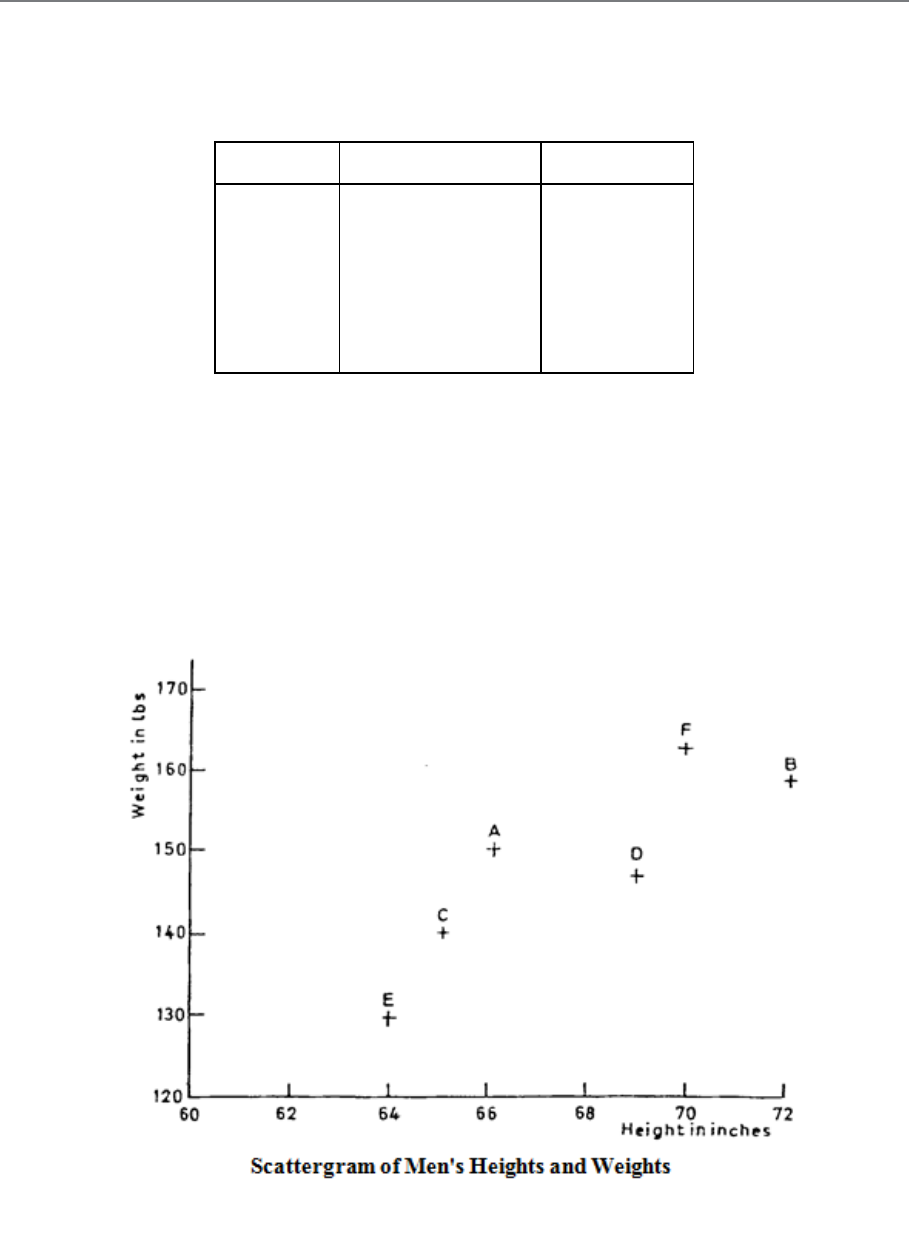

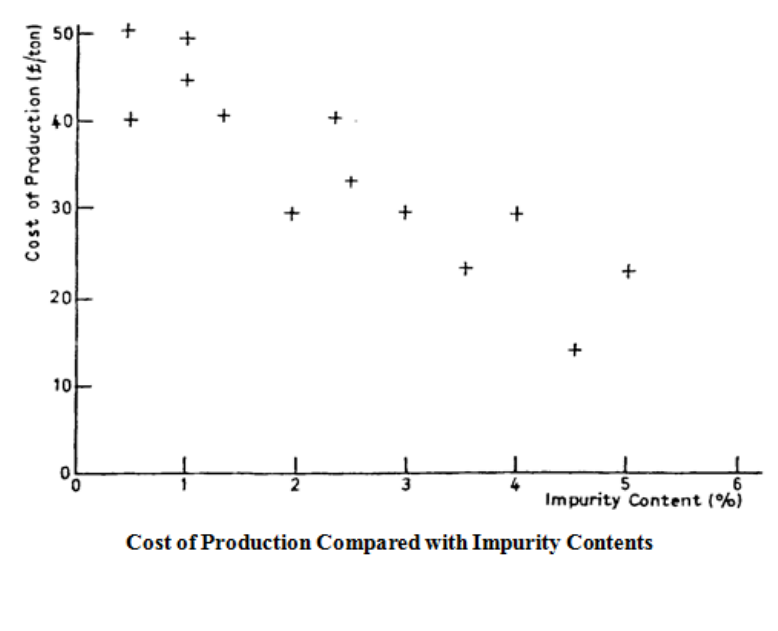

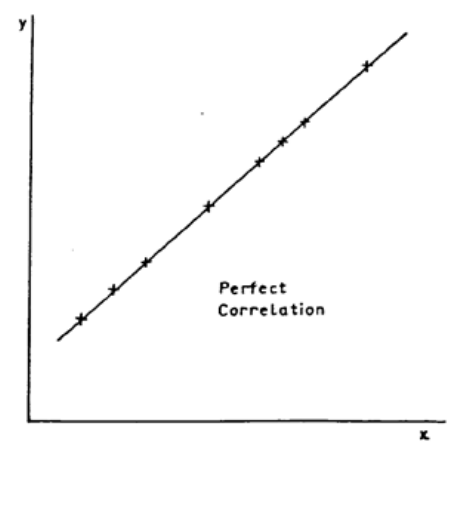

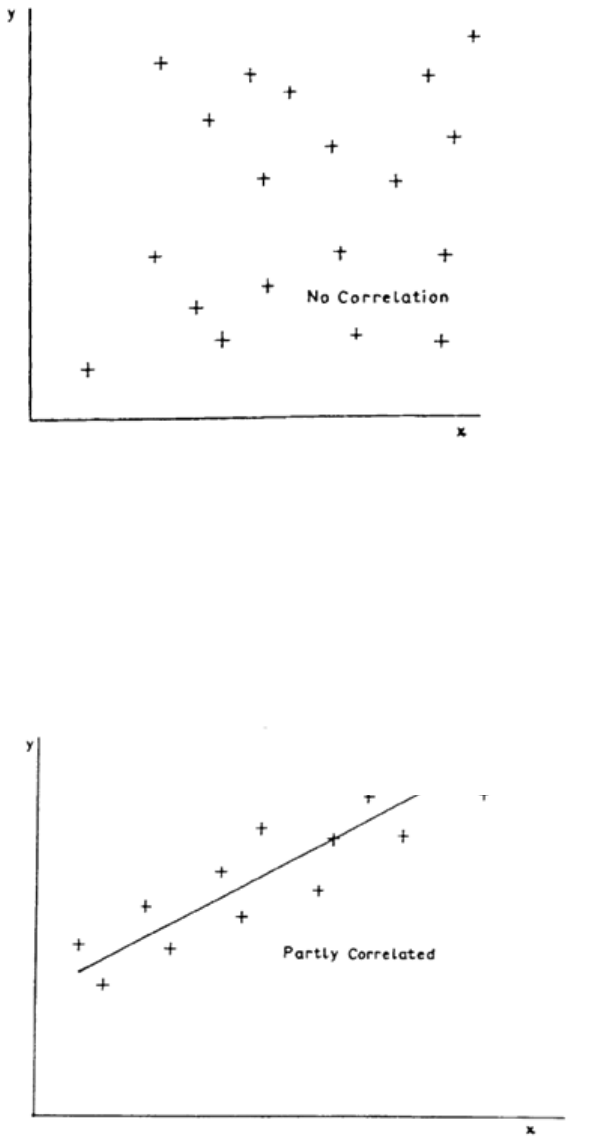

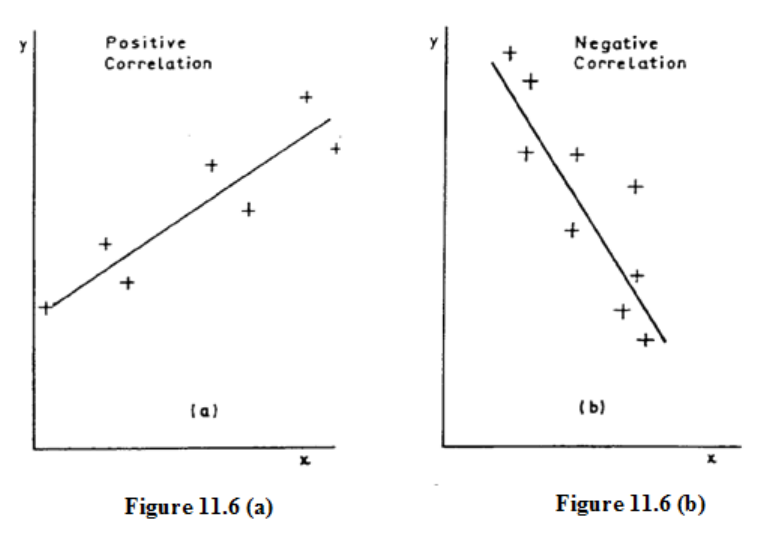

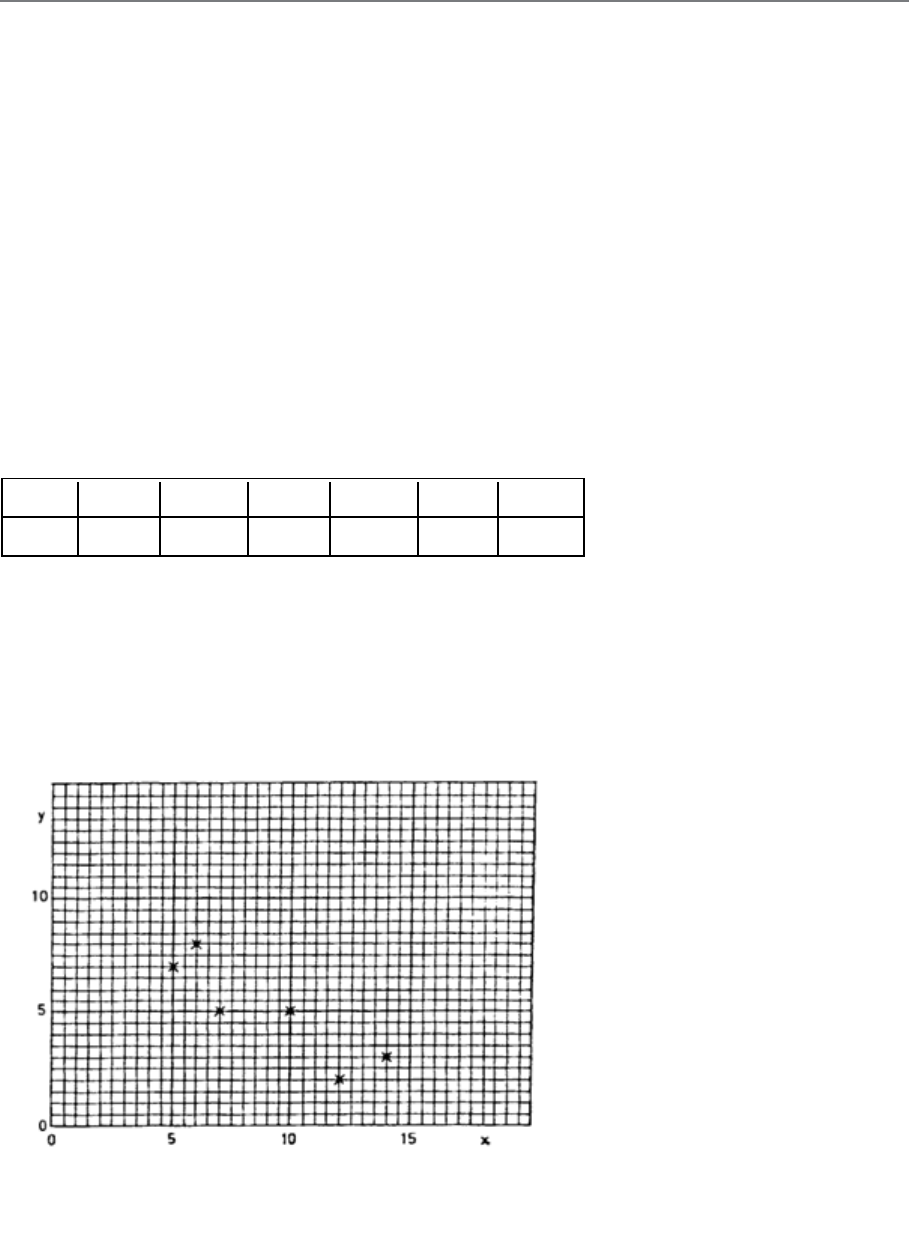

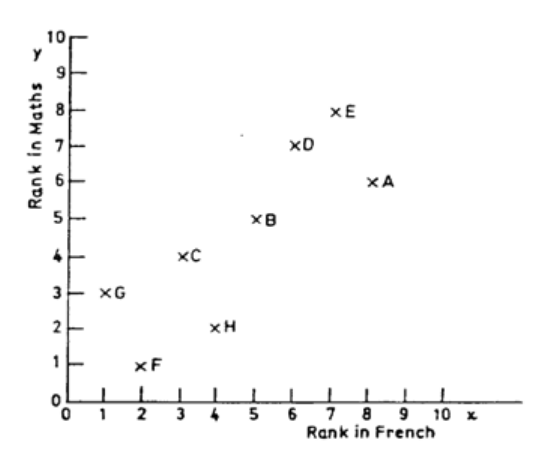

Scatter Diagrams

331

The Correlation Coefficient

337

Rank Correlation

343

11: LINEAR REGRESSION

351

Introduction

353

Regression Lines

355

Use of Regression

361

Connection between Correlation and Regression

363

Page 5

Study

Unit

Title Page

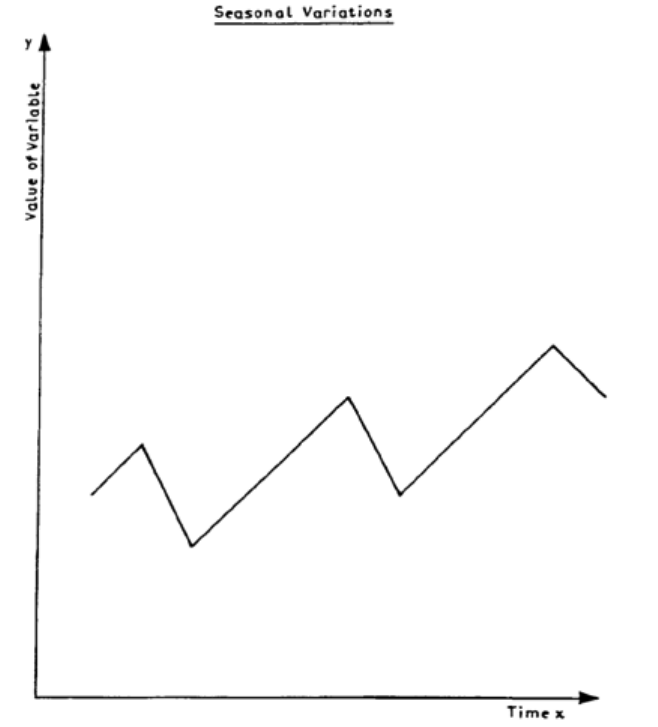

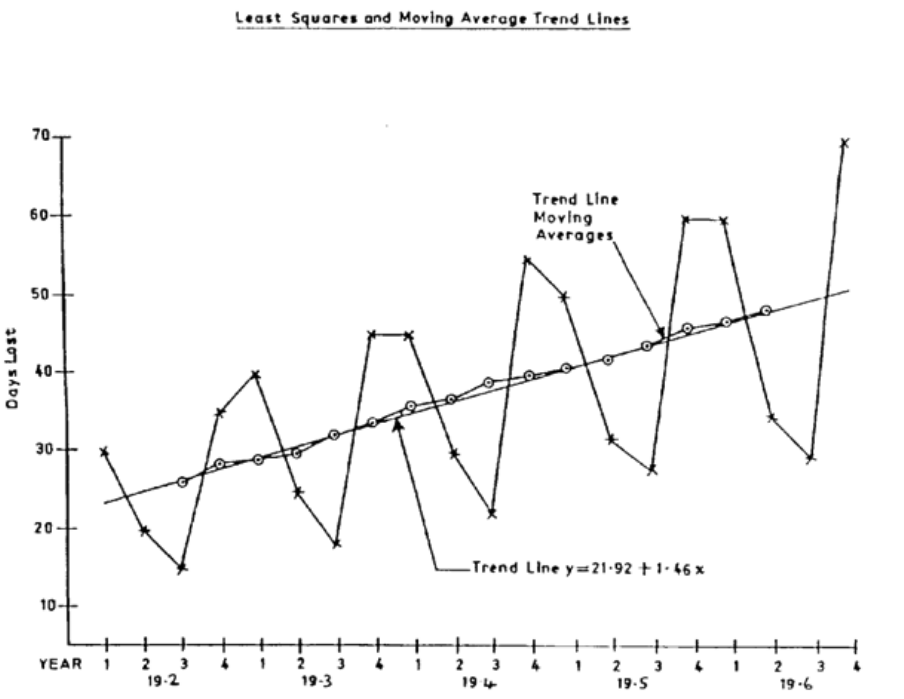

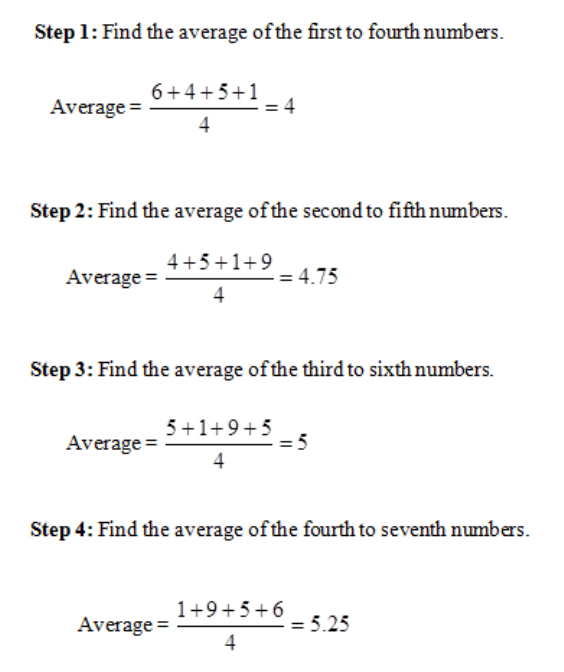

12: TIME SERIES ANALYSIS I

365

Introduction

367

Structure of a Time Series

369

Calculation of Component Factors for the Additive Model

375

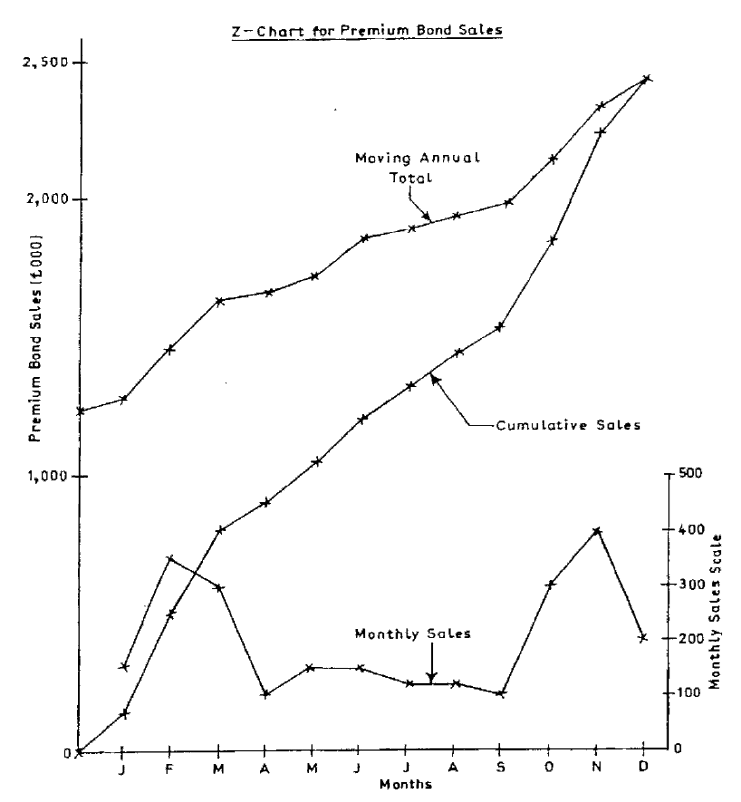

13: TIME SERIES ANALYSIS II

387

Forecasting

389

The Z Chart

393

Summary

395

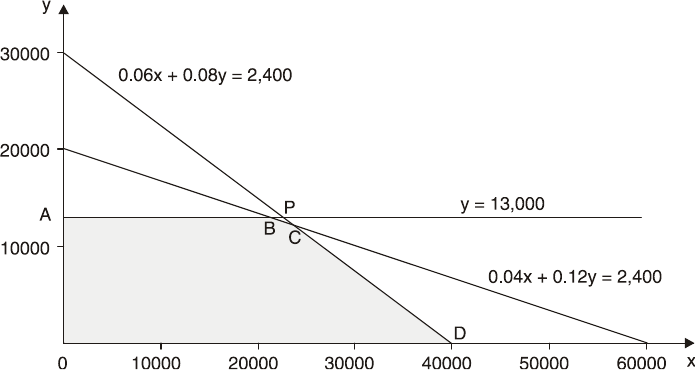

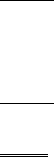

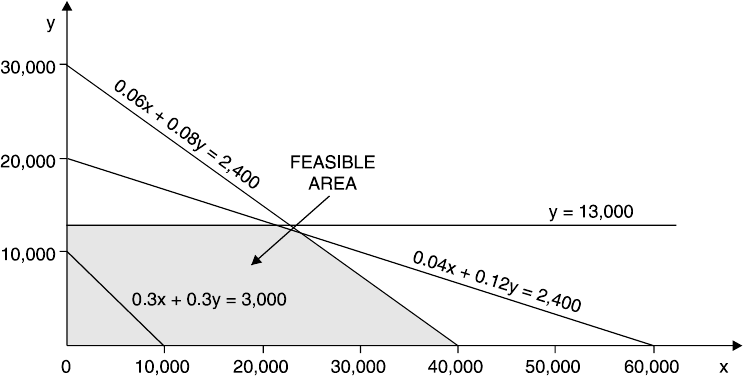

14: LINEAR PROGRAMMING

397

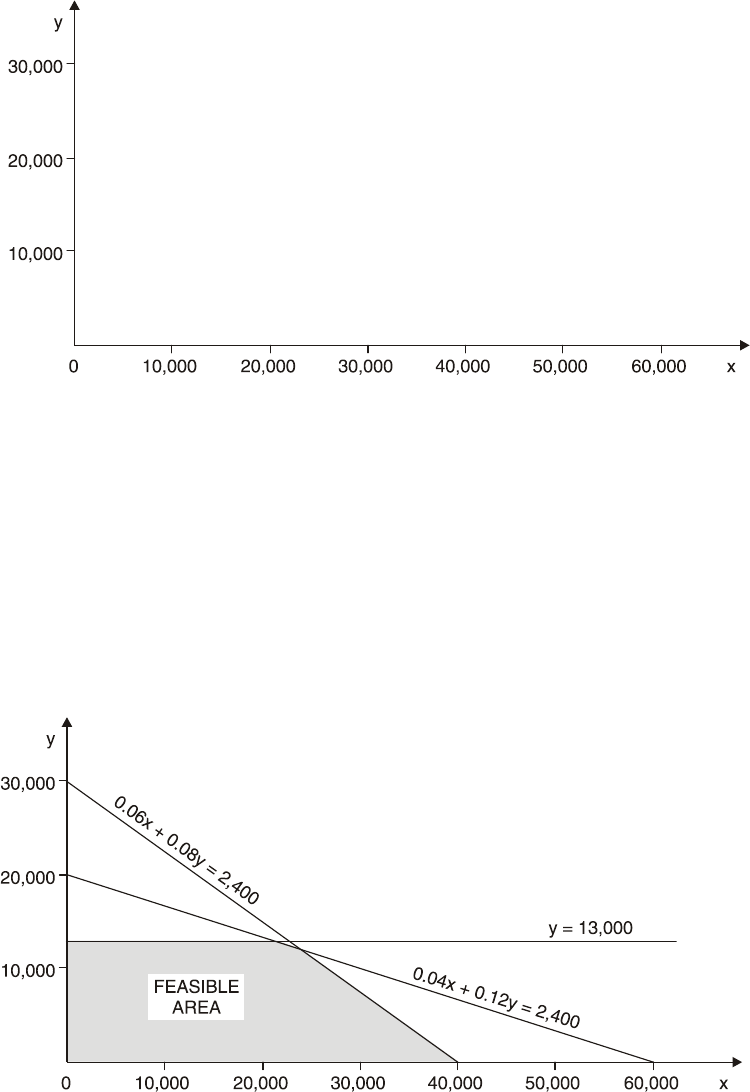

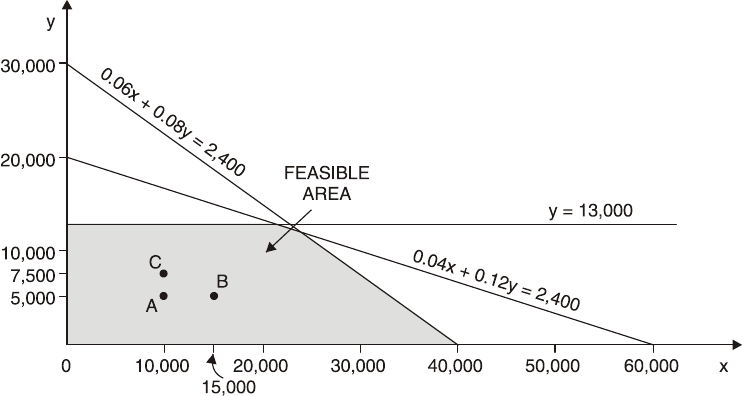

The Graphical Method

399

The Graphical Method Using Simultaneous Equations

417

Sensitivity Analysis (graphical)

423

The Principles of the Simplex Method

433

Sensitivity Analysis (simplex)

447

Using Computer Packages

455

Using Linear Programming

459

15: RISK AND UNCERTAINTY

463

Risk & Uncertainty

465

Allowing for Uncertainty

467

Probabilities and Expected Value

471

Decision Rules

475

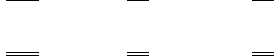

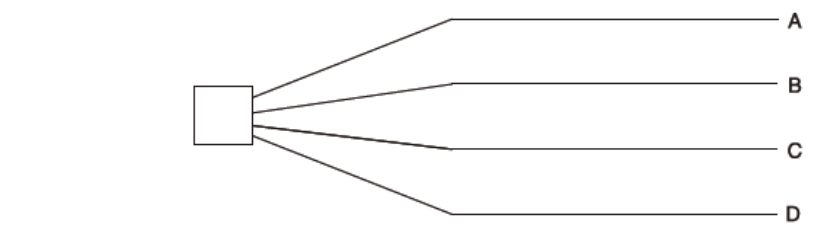

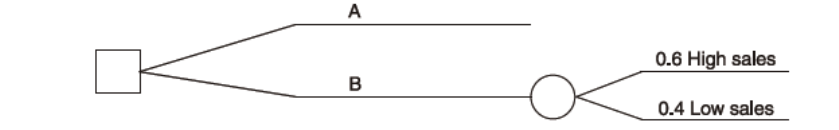

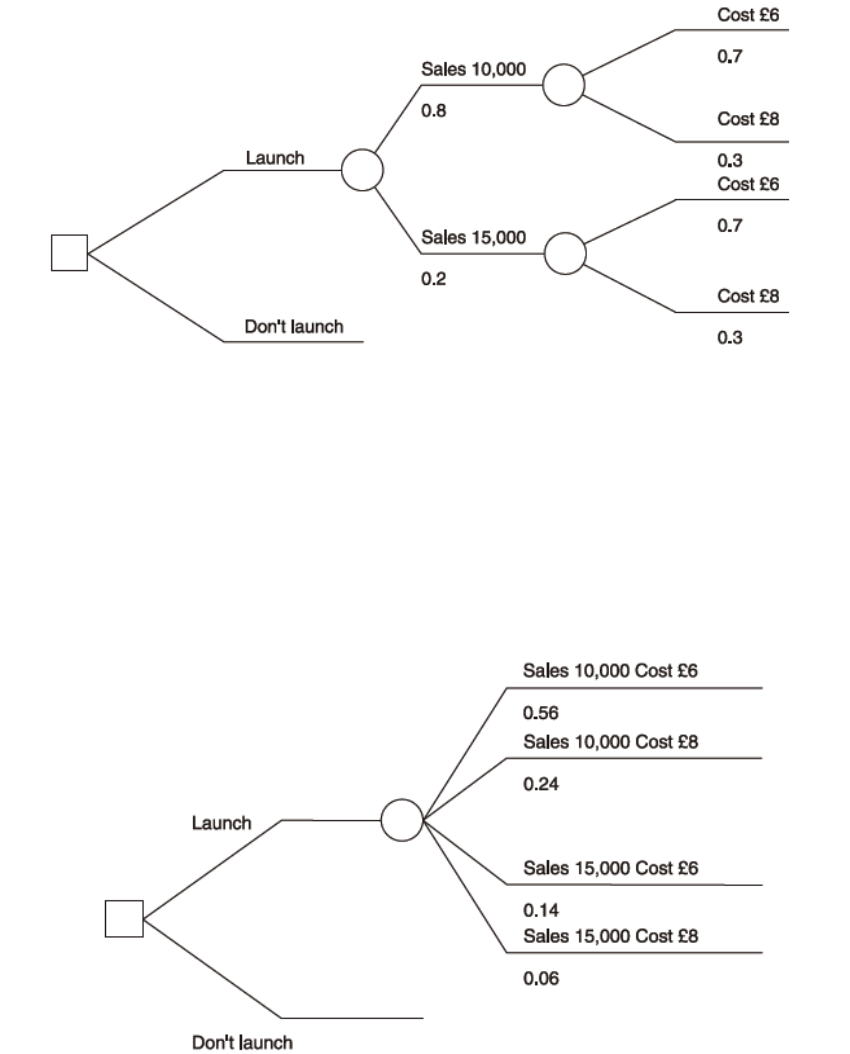

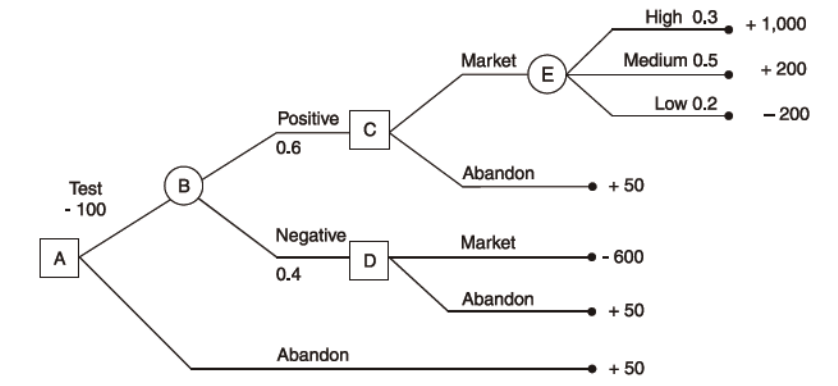

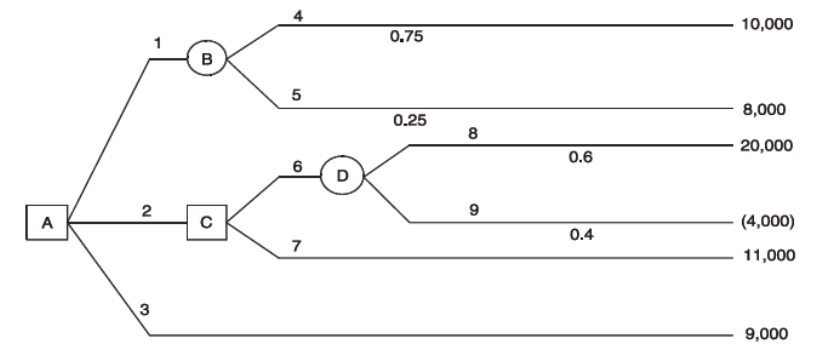

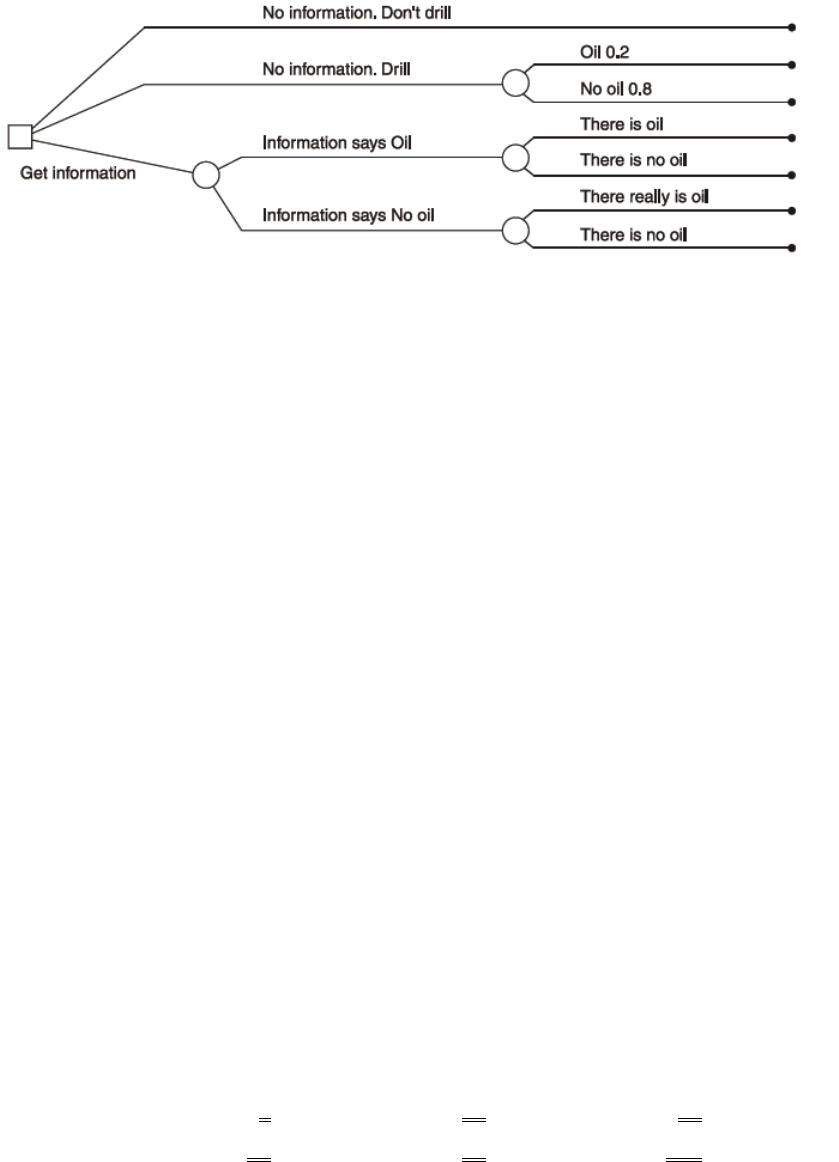

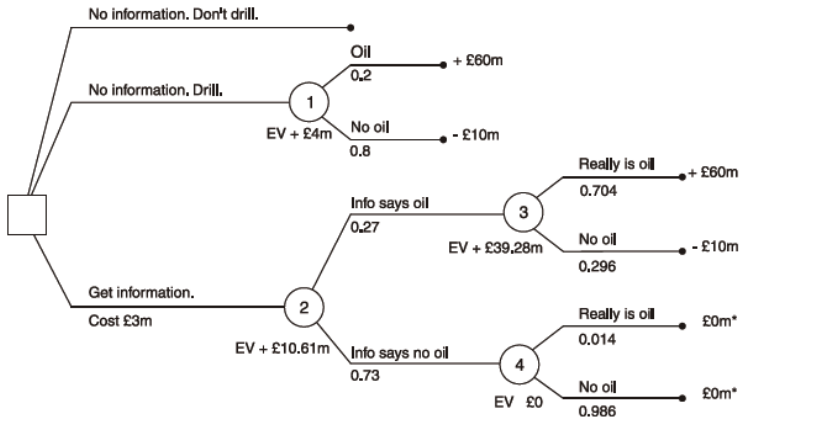

Decision Trees

481

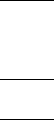

The value of information

491

Sensitivity Analysis

503

Simulation Models

505

16: SPREADSHEETS

509

Origins of Spreadsheets

511

Modern Spreadsheets

513

Concepts

515

How Spreadsheets work

521

Users of Spreadsheets

523

Advantages & Disadvantages of Spreadsheets

525

Spreadsheets in Today’s Climate

527

Page 6

BLANK

Page 7

Stage: Level 1

Subject Title: L1.4 Business Mathematics

Aim

The aim of this subject is to provide students with the tools and techniques to understand the

mathematics associated with managing business operations. Probability and risk play an

important role in developing business strategy. Preparing forecasts and establishing the

relationships between variables are an integral part of budgeting and planning. Financial

mathematics provides an introduction to interest rates and annuities and to investment

appraisals for projects. Preparing graphs and tables in summarised formats and using

spreadsheets are important in both the calculation of data and the presentation of information

to users.

Learning Objectives:

On successful completion of this subject students should be able to show:

• Demonstrate the use of basic mathematics and solve equations and inequalities

• Calculate probability and demonstrate the use of probability where risk and

uncertainty exists

• Apply techniques for summarising and analysing data

• Calculate correlation coefficient for bivariate data and apply techniques for simple

regression

• Demonstrate forecasting techniques and prepare forecasts

• Calculate present and future values of cash flows and apply financial mathematical

techniques

• Apply spreadsheets to calculate and present data

Page 8

Syllabus:

1. Basic Mathematics

• Use of formulae, including negative powers as in the formulae for the learning

curve

• Order of operations in formulae, including brackets, powers and roots

• Percentages and ratios

• Rounding of numbers

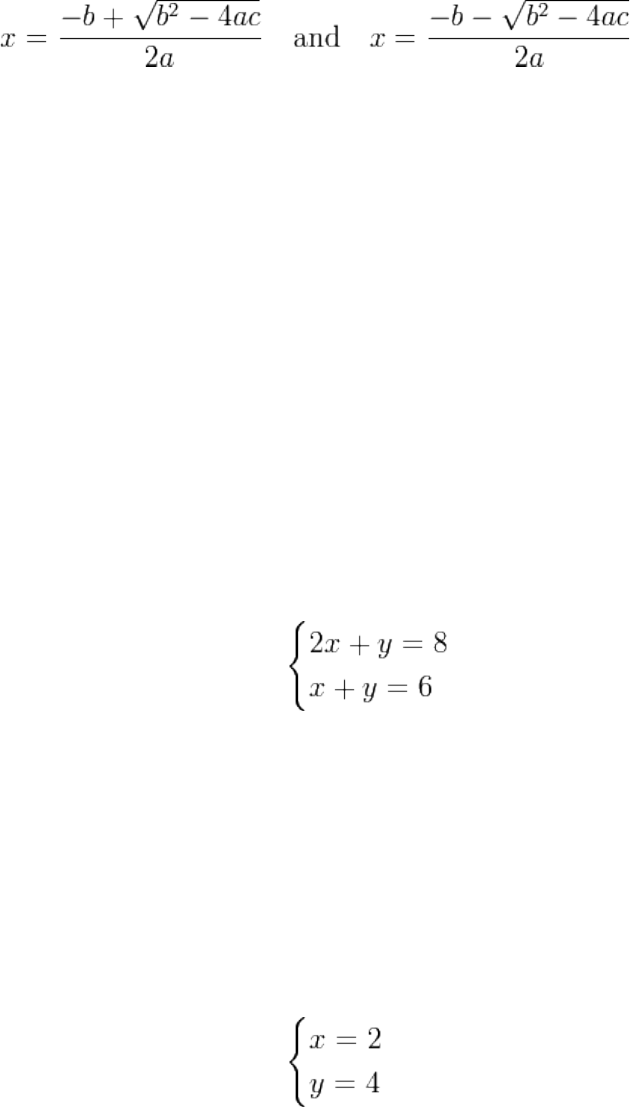

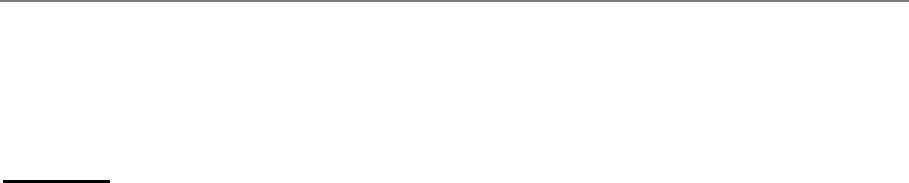

• Basic algebraic techniques and solution of equations, including simultaneous

equations and quadratic equations

• Graphs of linear and quadratic equations

• Manipulation of inequalities

2. Probability

• Probability and its relationship with proportion and per cent

• Addition and multiplication rules of probability theory

• Venn diagrams

• Expected values and expected value tables

• Risk and uncertainty

3. Summarising and Analysing Data

• Data and information

• Tabulation of data

• Graphs, charts and diagrams: scatter diagrams, histograms, bar charts and ogives.

• Summary measures of central tendency and dispersion for both grouped and

ungrouped data

• Frequency distributions

• Normal distribution

• Pareto distribution and the “80:20” rule

• Index numbers

Page 9

4. Relationships between variables

• Scatter diagrams

• Correlation co-efficient: Spearman’s rank correlation coefficient and Pearson’s

correlation coefficient

• Simple linear regression

5. Forecasting

• Time series analysis – graphical analysis

• Trends in time series – graphs, moving averages and linear regressions

• Seasonal variations using both additive and multiplicative models

• Forecasting and its limitations

6. Financial Mathematics

• Simple and compound interest

• Present value(including using formulae and tables)

• Annuities and perpetuities

• Loans and Mortgages

• Sinking funds and savings funds

• Discounting to find net present value (NPV) and internal rate of return (IRR)

• Interpretation of NPV and IRR

Page 10

7. Spreadsheets

• Features and functions of commonly used spreadsheet software, workbook,

worksheet, rows, columns, cells, data, text, formulae, formatting, printing, graphs and

macros.

• Advantages and disadvantages of spreadsheet software, when compared to manual

analysis and other types of software application packages

• Use of spreadsheet software in the day to day work: budgeting, forecasting, reporting

performance, variance analysis, what-if analysis, discounted cash flow calculations

Page 11

STUDY UNIT 1

Probability

Contents

Unit

Title

Page

A.

Estimating Probabilities

13

Introduction

13

Theoretical Probabilities

14

Empirical Probabilities

15

B.

Types of Event

17

C.

The Two Laws of Probability

19

Addition Law for Mutually Exclusive Events

19

Addition Law for a Complete List of Mutually Exclusive Events

20

Addition Law for Non-Mutually-Exclusive Events

Multiplication Law for Independent Events

Distinguishing the Laws

D.

Tree Diagrams

29

Examples

29

E.

Binomial Distribution

39

F.

Poisson Distribution

41

G.

Venn Diagrams

43

Page 12

BLANK

Page 13

A. ESTIMATING PROBABILITIES

Introduction

Suppose someone tells you “there is a 50-50 chance that we will be able to deliver your order

on Friday”. This statement means something intuitively, even though when Friday arrives

there are only two outcomes. Either the order will be delivered or it will not. Statements like

this are trying to put probabilities or chances on uncertain events.

Probability is measured on a scale between 0 and 1. Any event which is impossible has a

probability of 0, and any event which is certain to occur has a probability of 1. For example,

the probability that the sun will not rise tomorrow is 0; the probability that a light bulb will

fail sooner or later is 1. For uncertain events, the probability of occurrence is somewhere

between 0 and 1. The 50-50 chance mentioned above is equivalent to a probability of 0.5.

Try to estimate probabilities for the following events. Remember that events which are more

likely to occur than not have probabilities which are greater than 0.5, and the more certain

they are the closer the probabilities are to 1. Similarly, events which are more likely not to

occur have probabilities which are less than 0.5. The probabilities get closer to 0 as the events

get more unlikely.

(a) The probability that a coin will fall heads when tossed.

(b) The probability that it will snow next Christmas.

(c) The probability that sales for your company will reach record levels next year.

(d) The probability that your car will not break down on your next journey.

(e) The probability that the throw of a dice will show a six.

Page 14

The probabilities are as follows:

(a) The probability of heads is 0.5.

(b) This probability is quite low. It is somewhere between 0 and 0.1.

(c) You can answer this one yourself.

(d) This depends on how frequently your car is serviced. For a reliable car it should be

greater than 0.99.

(e) The probability of a six is 1/6 or 0.167.

Theoretical Probabilities

Sometimes probabilities can be specified by considering the physical aspects of the situation.

For example, consider the tossing of a coin. What is the probability that it will fall heads?

There are two sides to a coin. There is no reason to favour either side as a coin is

symmetrical. Therefore the probability of heads, which we call P(H) is:

P(H) = 0.5.

Another example is throwing a dice. A dice has six sides. Again, assuming it is not weighted

in favour of any of the sides, there is no reason to favour one side rather than another.

Therefore the probability of a six showing uppermost, P(6), is:

P(6) = 1/6 = 0.167.

Page 15

As a third and final example, imagine a box containing 100 beads of which 23 are black and

77 white. If we pick one bead out of the box at random (blindfold and with the box well

shaken up) what is the probability that we will draw a black bead? We have 23 chances out of

100, so the probability is:

(or P = 0.23)

Probabilities of this kind, where we can assess them from our prior knowledge of the

situation, are also called “a priori” probabilities.

In general terms, we can say that if an event E can happen in h ways out of a total of n

possible equally likely ways, then the probability of that event occurring (called a success) is

given by:

P(E) =

=

Empirical Probabilities

Often it is not possible to give a theoretical probability of an event. For example, what is the

probability that an item on a production line will fail a quality control test? This question can

be answered either by measuring the probability in a test situation (i.e. empirically) or by

relying on previous results. If 100 items are taken from the production line and tested, then:

Probability of failure P(F) =

So, if 5 items actually fail the

test

23

–––

100

h

–––

n

Number of possible ways of E occurring

----------------------------------------------------

Total number of possible outcomes

Number of items which fail

----------------------------------------------------

Total number of items tested

Page 16

P(F) = = 0.05.

Sometimes it is not possible to set up an experiment to calculate an empirical probability. For

example, what are your chances of passing a particular examination? You cannot sit a series

of examinations to answer this. Previous results must be used. If you have taken 12

examinations in the past, and failed only one, you might estimate:

5

------

100

Page 17

B. TYPES OF EVENT

There are five types of event:

• Mutually exclusive

• Non-mutually-exclusive

• Independent

• Dependent or non-independent

• Complementary.

(a) Mutually Exclusive Events

If two events are mutually exclusive then the occurrence of one event precludes the

possibility of the other occurring. For example, the two sides of a coin are mutually exclusive

since, on the throw of the coin, “heads” automatically rules out the possibility of “tails”. On

the throw of a dice, a six excludes all other possibilities. In fact, all the sides of a dice are

mutually exclusive; the occurrence of any one of them as the top face automatically excludes

any of the others.

(b) Non-Mutually-Exclusive Events

These are events which can occur

. For example, in a pack of playing cards hearts and queens are non-mutually-exclusive since

there is one card, the queen of hearts, which is both a heart and a queen and so satisfies both

criteria for success.

(c) Independent Events

These are events which are not mutually exclusive and where the occurrence of one event

does not affect the occurrence of the other. For example, the tossing of a coin in no way

affects the result of the next toss of the coin; each toss has an independent outcome.

Page 18

(d) Dependent or Non-Independent Events

These are situations where the outcome of one event is dependent on another event. The

probability of a car owner being able to drive to work in his car is dependent on him being

able to start the car. The probability of him being able to drive to work given that the car

starts is a conditional probability and

P(Drive to work|Car starts)

where the vertical line is a shorthand way of writing “given that”.

(e) Complementary Events

An event either occurs or it does not occur, i.e. we are certain that one or other of these

situations holds.

For example, if we throw a dice and denote the event where a six is uppermost by A, and the

event where either a one, two, three, four or five is uppermost by Ā (or not A) then A and Ā

are complementary, i.e. they are mutually exclusive with a total probability of 1. Thus:

P(A) + P(Ā) = 1.

This relationship between complementary events is useful as it is often easier to find the

probability of an event not occurring than to find the probability that it does occur. Using the

above formula, we can always find P(A) by subtracting P(Ā) from 1.

Page 19

C. THE TWO LAWS OF PROBABILITY

Addition Law for Mutually Exclusive Events

Consider again the example of throwing a dice. You will remember that

What is the chance of getting 1, 2 or 3?

From the symmetry of the dice you can see that P(1 or 2 or 3) = 0.5. But also, from the

equations shown above you can see that

P(1) + P(2) + P(3) = 1/6 + 1/6 + 1/6 = 0.5.

This illustrates that

Page 20

P(1 or 2 or 3) = P(1) + P(2) + P(3)

This result is a general one and it is called the addition law of probabilities for mutually

exclusive events. It is used to calculate the probability of one of any group of mutually

exclusive events. It is stated more generally as:

P(A or B or ... or N) = P(A) + P(B) + ... + P(N)

where A, B ... N are mutually exclusive events.

Addition Law for a Complete List of Mutually Exclusive

Events

(a) If all possible mutually exclusive events are listed, then it is certain that one of these

outcomes will occur. For example, when the dice is tossed there must be one number

showing afterwards.

P(1 or 2 or 3 or 4 or 5 or 6) = 1.

Using the addition law for mutually exclusive events, this can also be stated as

P(1) + P(2) + P(3) + P(4) + P(5) + P(6) = 1.

Again this is a general rule. The sum of the probabilities of a complete list of mutually

exclusive events will always be 1.

Page 21

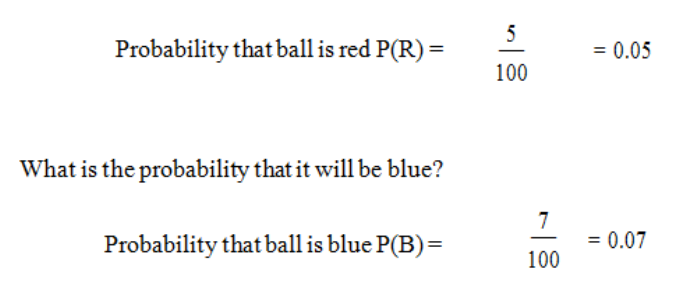

Example

An urn contains 100 coloured balls. Five of these are red, seven are blue and the rest are

white. One ball is to be drawn at random from the urn.

What is the probability that it will be red?

What is the probability that it will be red or blue?

P(R or B) = P(R) + P(B) = 0.05 + 0.07 = 0.12.

This result uses the addition law for mutually exclusive events since a ball cannot be both

blue and red.

What is the probability that it will be white?

The ball must be either red or blue or white. This is a complete list of mutually exclusive

possibilities.

Therefore P(R) + P(B) + P(W) = 1

P(W) = 1 – P(R) – P(B)

= 1 – 0.05- 0.07

= 0.88

Page 22

Addition Law for Non-Mutually-Exclusive Events

Events which are non-mutually-exclusive are, by definition, capable of occurring together.

The addition law can still be used but the probability of the events occurring together must be

deducted:

P(A or B or both) = P(A) + P(B) – P(A and B).

Examples

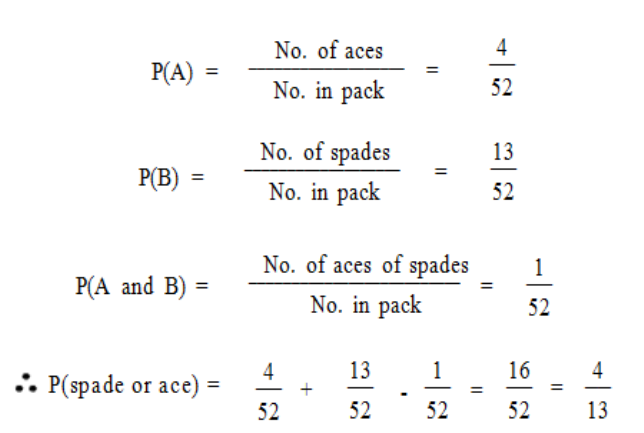

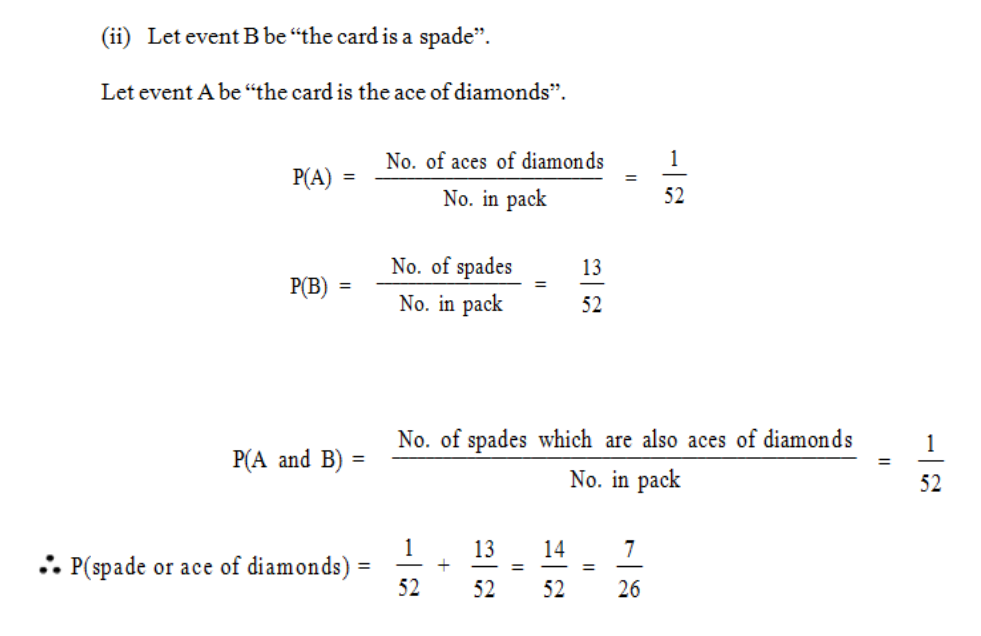

(a) If one card is drawn from a pack of 52 playing cards, what is the probability: (i) that it

is either a spade or an ace; (ii) that it is either a spade or the ace of diamonds?

(i) Let event B be “the card is a spade”. Let event A be “the card is an ace”.

We require P(spade or ace [or both]) = P(A or B)

= P(A) + P(B) – P(A and B)

Page 23

(b) At a local shop 50% of customers buy unwrapped bread and 60% buy wrapped bread.

What proportion of customers buy at least one kind of bread if 20% buy both wrapped

and unwrapped bread?

Let S represent all the customers.

Let T represent those customers buying unwrapped bread.

Let W represent those customers buying wrapped bread.

P(buy at least one kind of bread) = P(buy wrapped or unwrapped or both)

= P(T or W)

= P(T) + P(W) – P(T and W)

= 0.5 + 0.6 – 0.2

= 0.9

Page 24

So, 9/10 of the customers buy at least one kind of bread.

Multiplication Law for Independent Events

Consider an item on a production line. This item could be defective or acceptable. These two

possibilities are mutually exclusive and represent a complete list of alternatives. Assume that:

Probability that it is defective, P(D) = 0.2

Probability that it is acceptable, P(A) = 0.8.

Now consider another facet of these items. There is a system for checking them, but only

every tenth item is checked. This is shown as:

Probability that it is checked P(C) = 0.1

Probability that it is not checked P(N) = 0.9.

Again these two possibilities are mutually exclusive and they represent a complete list of

alternatives. An item is either checked or it is not.

Consider the possibility that an individual item is both defective and not checked. These two

events can obviously both occur together so they are not mutually exclusive. They are,

however, independent. That is to say, whether an item is defective or acceptable does not

affect the probability of it being tested.

There are also other kinds of independent events. If you toss a coin once and then again a

second time, the outcome of the second test is independent of the results of the first one. The

Page 25

results of any third or subsequent test are also independent of any previous results. The

probability of heads on any test is 0.5 even if all the previous tests have resulted in heads.

To work out the probability of two independent events both happening, you use the

multiplication law. This can be stated as:

P(A and B) = P(A) x P(B) if A and B are independent events.

Again this result is true for any number of independent events.

So P(A and B and ... and N) = P(A) x P(B) x ... x P(N).

Consider the example above. For any item:

Probability that it is defective, P(D) = 0.2

Probability that it is acceptable, P(A) = 0.8

Probability that it is checked, P(C) = 0.1

Probability that it is not checked, P(N) = 0.9.

Using the multiplication law to calculate the probability that an item is both defective and not

checked

P(D and N) = 0.2 x 0.9 = 0.18.

The probabilities of the other combinations of independent events can also be calculated.

P(D and C) = 0.2 x 0.1 = 0.02

P(A and N) = 0.8 x 0.9 = 0.72

P(A and C) = 0.8 x 0.1 = 0.08.

Page 26

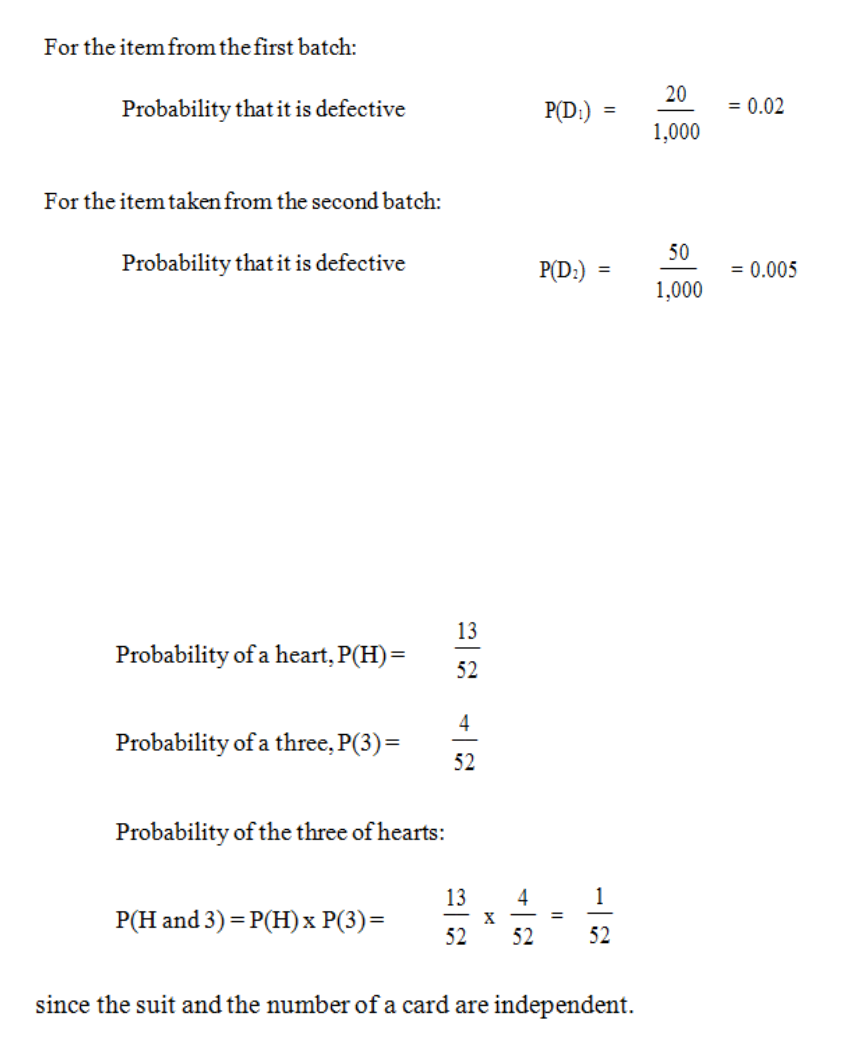

Examples

a) A machine produces two batches of items. The first batch contains 1,000 items of

which 20 are damaged. The second batch contains 10,000 items of which 50 are

damaged. If one item is taken from each batch, what it the probability that both items

are defective?

Since these two probabilities are independent

P(D1 and D2) = P(D1) x P(D2) = 0.02 x 0.005 = 0.0001.

b) A card is drawn at random from a well shuffled pack of playing cards. What is the

probability that the card is a heart? What is the probability that the card is a three?

What is the probability that the card is the three of hearts?

Page 27

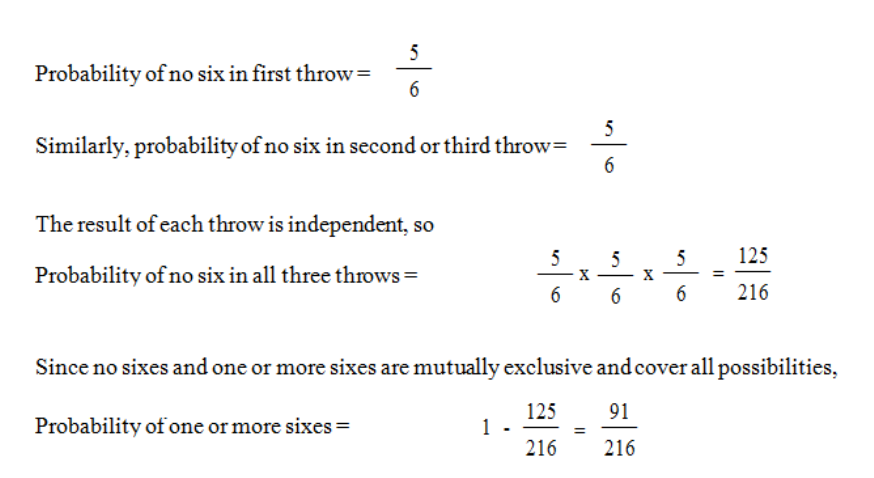

c) A dice is thrown three times. What is the probability of one or more sixes in these

three throws?

Distinguishing the Laws

Although the above laws of probability are not complicated, you must think carefully and

clearly when using them. Remember that events must be mutually exclusive before you can

use the addition law, and they must be independent before you can use the multiplication law.

Another matter about which you must be careful is the listing of equally likely outcomes. Be

sure that you list all of them. For example, we can list the possible results of tossing two

coins, namely:

First Coin Second Coin

Heads Heads

Tails Heads

Heads Tails

Tails Tails

There are four equally likely outcomes. Do not make the mistake of saying, for example, that

there are only two outcomes (both heads or not both heads); you must list all the possible

outcomes. (In this case “not both heads” can result in three different ways, so the probability

of this result will be higher than “both heads”.)

Page 28

In this example, the probability that there will be one heads and one tails (heads - tails, or

tails - heads) is 0.5. This is a case of the addition law at work, the probability of heads - tails (

1/4 ) plus the probability of tails - heads ( 1/4 ). Putting it another way, the probability of

different faces is equal to the probability of the same faces - in both cases1/2.

Page 29

D. TREE DIAGRAMS

A compound experiment, i.e. one with more than one component part, may be regarded as a

sequence of similar experiments. For example, the rolling of two dice can be considered as

the rolling of one followed by the rolling of the other; and the tossing of four coins can be

thought of as tossing one after the other. A tree diagram enables us to construct an exhaustive

list of mutually exclusive outcomes of a compound experiment.

Furthermore, a tree diagram gives us a pictorial representation of probability.

By exhaustive, we mean that every possible outcome is considered.

By mutually exclusive we mean, as before, that if one of the outcomes of the compound

experiment occurs then the others cannot.

Examples

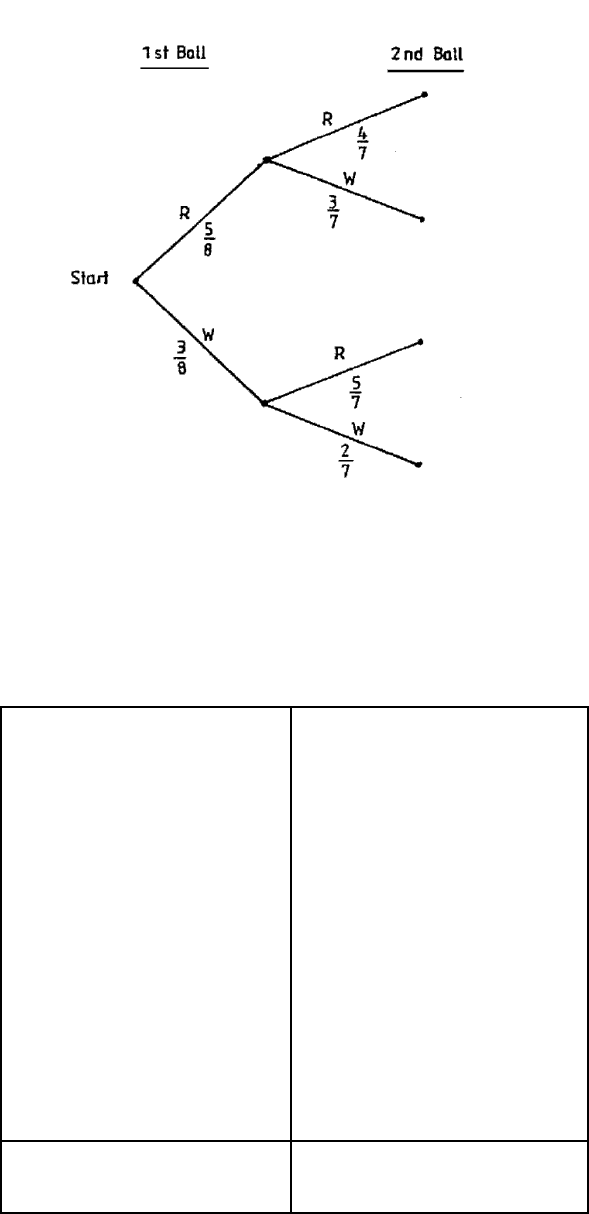

a) The concept can be illustrated using the example of a bag containing five red and

three white billiard balls. If two are selected at random without replacement, what is

the probability that one of each colour is drawn?

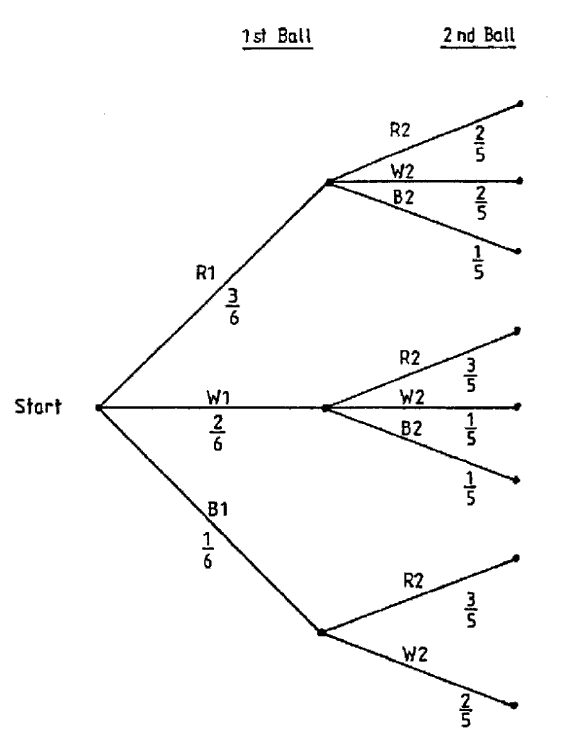

We can represent this as a tree diagram as in Figure 1.

N.B. R indicates red ball

W indicates white ball.

Probabilities at each stage are shown alongside the branches of the tree.

Figure 1.1

Page 30

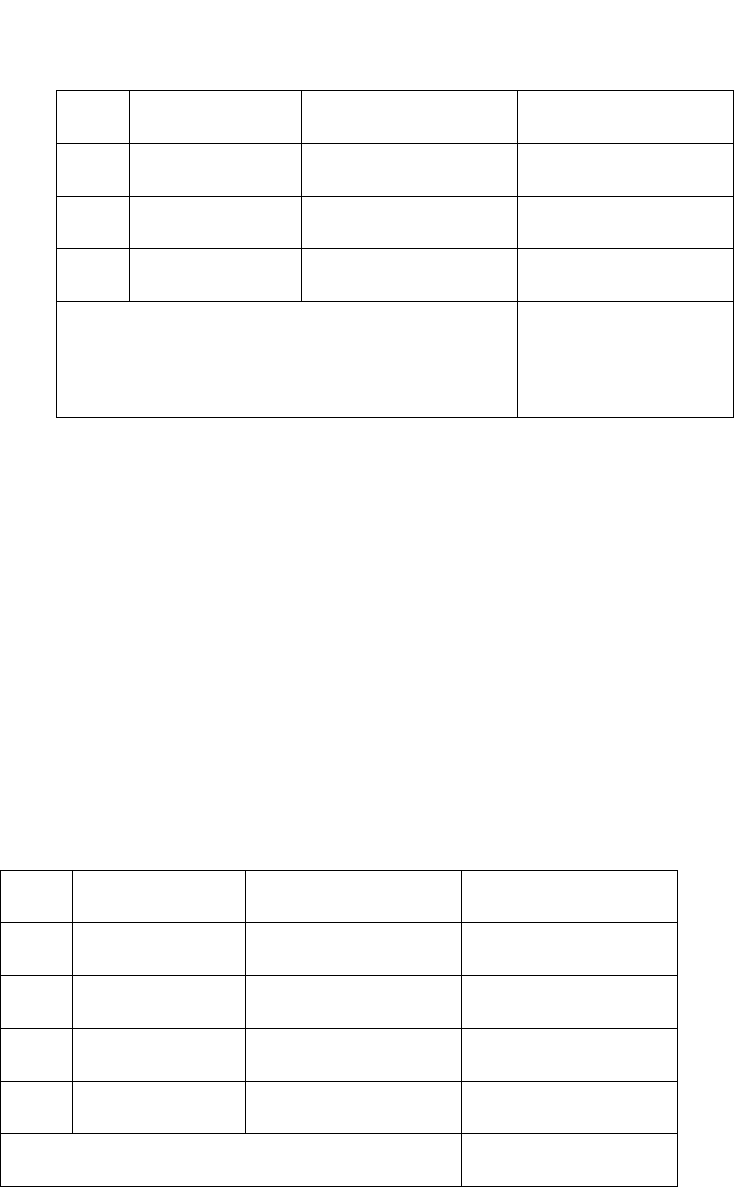

Table 1.1

Outcome

Probability

5

4

20

------ x ----- = –––

8

7

56

5

3

15

----- x ----- = –––

8

7

56

3

5

15

----- x ----- = –––

8

7

56

3

2

6

------ x ----- = –––

8

7

56

Total

1

RR

RW

WR

WW

Page 31

We work from left to right in the tree diagram. At the start we take a ball from the bag. This

ball is either red or white so we draw two branches labelled R and W, corresponding to the

two possibilities. We then also write on the branch the probability of the outcome of this

simple experiment being along that branch.

We then consider drawing a second ball from the bag. Whether we draw a red or a white ball

the first time, we can still draw a red or a white ball the second time, so we mark in the two

possibilities at the end of each of the two branches of our existing tree diagram. We can then

see that there are four different mutually exclusive outcomes possible, namely RR, RW, WR

and WW. We enter on these second branches the conditional probabilities associated with

them.

Thus, on the uppermost branch in the diagram we must insert the probability of

obtaining a second red ball given that the first was red. This probability is 4/7 as there are

only seven balls left in the bag, of which four are red. Similarly for the other branches.

Each complete branch from start to tip represents one possible outcome of the compound

experiment and each of the branches is mutually exclusive. To obtain the probability of a

particular outcome of the compound experiment occurring, we multiply the probabilities

along the different sections of the branch, using the general multiplication law for

probabilities.

We thus obtain the probabilities shown in Table 1.1. The sum of the probabilities should add

up to 1, as we know one or other of these mutually exclusive outcomes is certain to happen.

Page 32

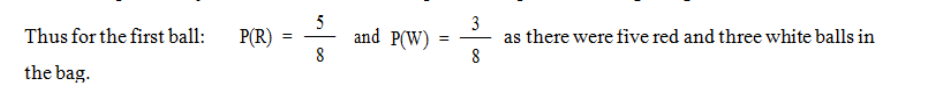

b) A bag contains three red balls, two white balls and one blue ball. Two balls are drawn

at random (without replacement). Find the probability that:

i. Both white balls are drawn.

ii. The blue ball is not drawn.

iii. A red then a white are drawn.

iv. A red and a white are drawn.

To solve this problem, let us build up a tree diagram.

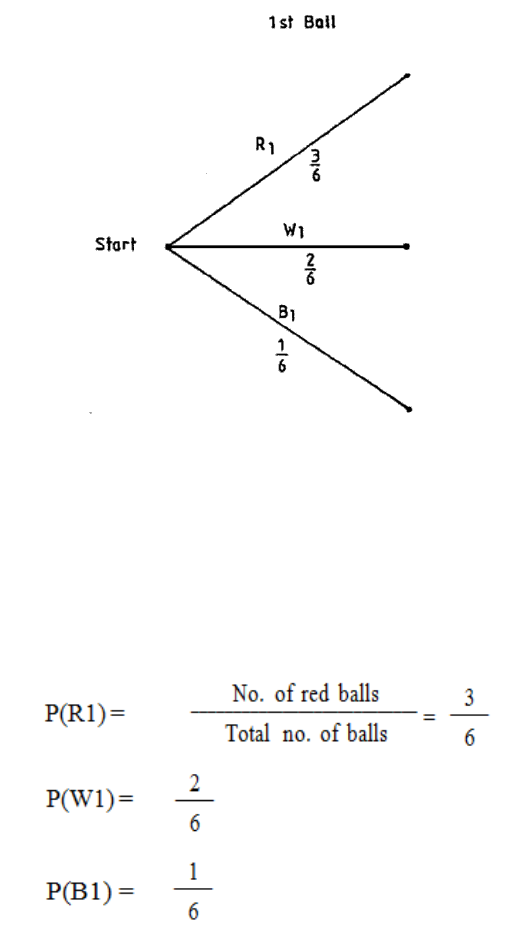

Figure 1.2

The first ball drawn has a subscript of 1, e.g. red first = R1. The second ball drawn has a

subscript of 2.

Page 33

Note there is only one blue ball in the bag, so if we picked a blue ball first then we can have

only a red or a white second ball. Also, whatever colour is chosen first, there are only five

balls left as we do not have replacement

Figure 1.3

Page 34

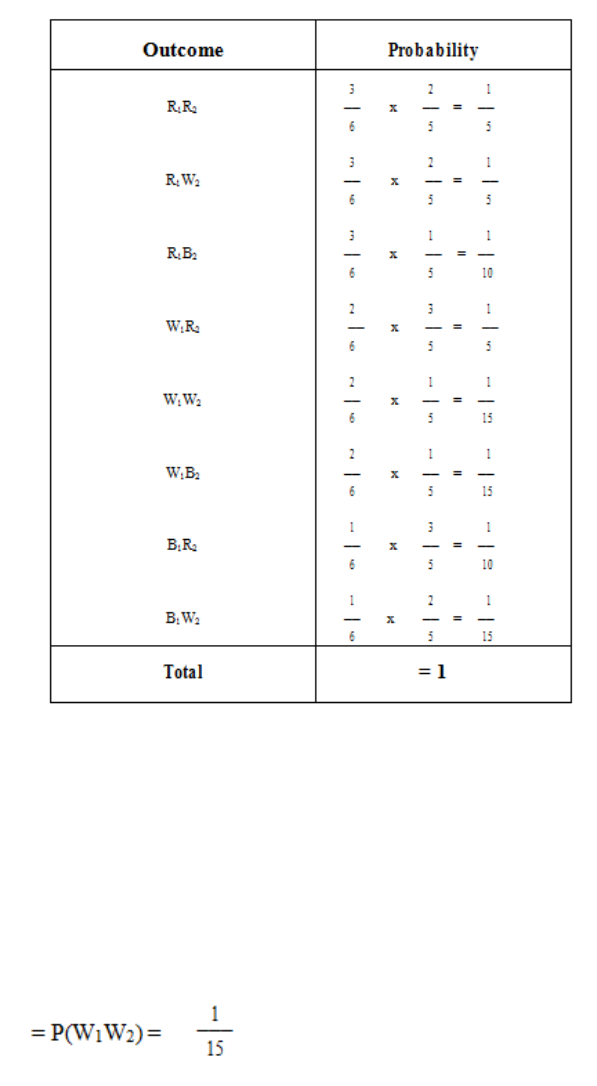

We can now list all the possible outcomes, with their associated probabilities:

Table 1.2

It is possible to read off the probabilities we require from Table 1.2.

(i) Probability that both white balls are drawn:

Page 35

(ii) Probability the blue ball is not drawn:

Probability that a red then a white are drawn:

Probability that a red and a white are drawn:

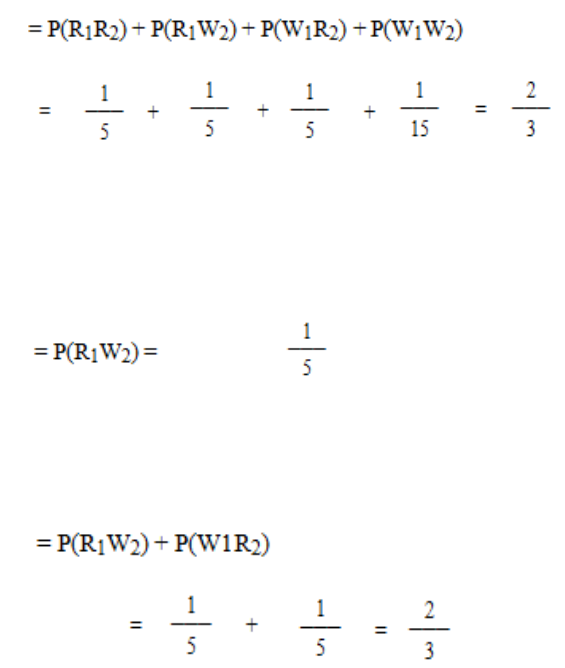

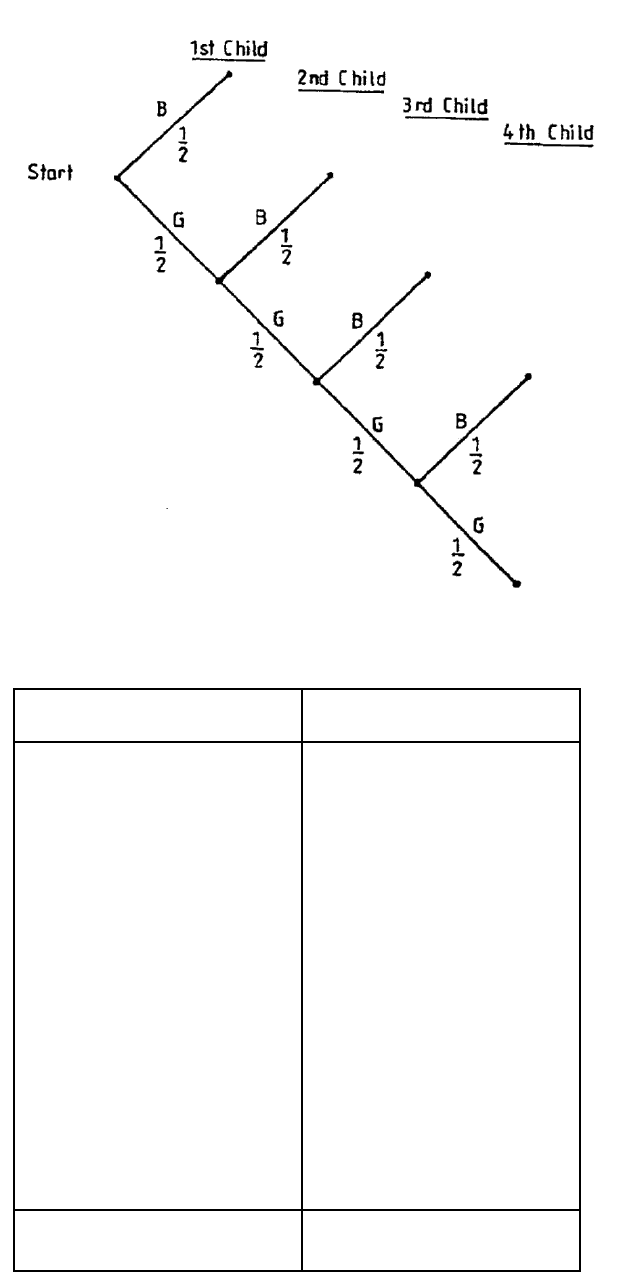

c) A couple go on having children, to a maximum of four, until they have a son. Draw a

tree diagram to find the possible families’ size and calculate the probability that they

have a son.

We assume that any one child is equally likely to be a boy or a girl, i.e. P(B) = P(G) = 1/2 .

Note that once they have produced a son, they do not have any more children. The tree

diagram will be as in Figure 1.4.

Page 36

Figure 1.4

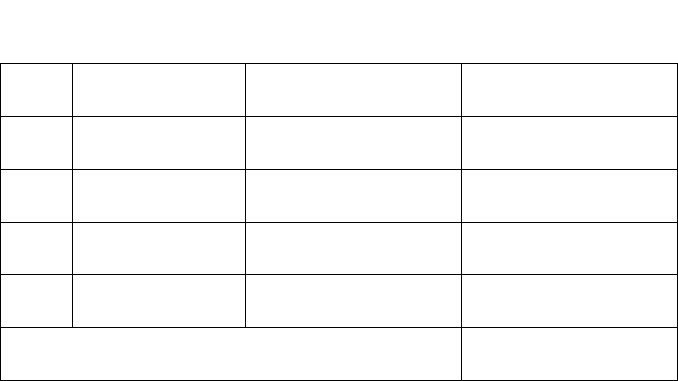

Table 1.3

Possible Families

Probability

1

1 Boy

––

2

1

1

1 Girl, 1 Boy

( –– ) 2 = ––

2

4

1

1

2 Girls, 1 Boy

( –– ) 3 = ––

2

8

1

1

3 Girls, 1 Boy

( –– ) 4 = ––

2

16

1

1

4 Girls

( –– ) 4 = ––

2

16

Total

= 1

Page 37

Probability they have a son is therefore:

Page 38

BLANK

Page 39

E. BINOMIAL DISTRIBUTION

The binomial distribution can be used to describe the likely outcome of events for discrete

variables which:

(a) Have only two possible outcomes; and

(b) Are independent.

Suppose we are conducting a questionnaire. The Binomial distribution might be used to

analyse the results if the only two responses to a question are ‘yes’ or ‘no’ and if the response

to one question (eg, ‘yes’) does not influence the likely response to any other question (ie

‘yes’ and ‘no’).

Put rather more formally, the Binomial distribution occurs when there are n independent trials

(or tests) with the probability of ‘success’ or ‘failure’ in each trial (or test) being constant.

Let p = the probability of ‘success’

Let q = the probability of ‘failure’

Then q = 1 – p

For example, if we toss an unbiased coin ten times, we might wish to find the probability of

getting four heads! Here n = 10, p (head) = 0.5, q (tail) = 0.5 and q = 1 – p.

The probability of obtaining r ‘successes’ in ‘n’ trials (tests) is given by the following

formula:

!)!(

!

rrn

n

C

rn

−

=

where C is the number of combinations.

The probability of getting exactly four heads out of ten tosses of an unbiased coin, can

therefore be solved as:

P(4) = 10C40.540.56

now

210

1234

78910

!4)!410(

!10

410 =

×××

×××

=

−

=C

so P(4) = 210 x (0.5)4 x (0.5)6

Page 40

P(4) = 210 x 0.625 x 0.015625

P(4) = 0.2051

In other words the probability of getting exactly four heads out of ten tosses of an unbiased

coin is 0.2051 or 20.51%.

It may be useful to state the formulae for finding all the possible probabilities of obtaining r

successes in n trials.

Where P(r) = nCrprqn-r

And r = 0, 1, 2, 3, ….n

then, from our knowledge of combinations

P(O) = qn

P(1) = npq n-1

P(2) = n (n-1)P2qn-2

2x1

P(3) = n(n-1) (n-2) p3qn-3

3x2x1

P(4) = n(n-1)(n-2)(n-3) p4qn-4

4x3x2x1

P (n-2) = n(n-1) pn-2q2

2x1

P (n-1) = npn-1q

P(n) = pn

Page 41

F. POISSON DISTRIBUTION

Introduction

The poisson distribution may be regarded as a special case of the binomial distribution. As

with the Binomial distribution, the Poisson distribution can be used where there are only two

possible outcomes:-

1. Success (p)

2. Failure (q)

These events are independent. The Poisson distribution is usually used where n is very large

but p is very small, and where the mean np is constant and typically < 5. As p is very small

(p < 0.1 and often much less), then the chance of the event occurring is extremely low. The

Poisson distribution is therefore typically used for unlikely events such as accidents, strikes

etc.

The Poisson distribution is also used to solve problems where events tend to occur at random,

such as incoming phone calls, passenger arrivals at a terminal etc.

Whereas the formula for solving Binomial problems uses the probabilities, for both “success”

(p) and “failure” (q), the formula for solving Poisson problems only uses the probabilities for

“success” (p).

If µ is the mean, it is possible to show that the probability of r successes is given by the

formula:

P(r) = e-µµr

r!

where e = exponential constant = 2.7183

µ = mean number of successes = np

n = number of trails

p = probability of “success”

r = number of successes

If we substitute r = 0, 1, 2, 3, 4, 5…. in this formula we obtain the following expressions:

P (O) = e-µ

P (1) = µe-u

P (2) = µ2e-µ

2 x 1

P (3) = µ3e-µ

Page 42

3x2x1

P (4) = µ4e-µ

4x3x2x1

P (5) = µ5e-µ

5x4x3x2x1

In questions you are either given the mean µ or you have to find µ from the information

given, which is usually data for n and p; µ is then obtained from the relationship µ = np.

You have to be able to work out e raised to a negative power.

e-3 is the same as 1 so you can simply work this out using 1

e3 2.71833

Alternatively, many calculators have a key marked ex. The easiest way to find e-3 on your

calculator is to enter 3, press +/- key, press e key, and you should obtain 0.049787. If your

calculator does not have an e key but has an xy key, enter 2.7813, press xy key, enter 3, press

+/- key, then press = key; you should obtain 0.049786.

Page 43

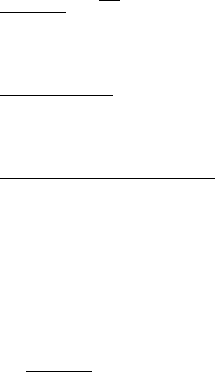

G. VENN DIAGRAMS

Definition:

Venn diagrams or set diagrams are diagrams that show all possible logical relations between

a finite collection of sets.

A Venn diagram is constructed with a collection of simple closed curves drawn in a plane.

The “principle of these diagrams is that classes (or sets) be represented by regions in such

relation to one another that all the possible logical relations of these classes can be indicated

in the same diagram. That is, the diagram initially leaves room for any possible relation of

the classes, and the actual or given relation, can then be specified by indication that some

particular region is nor or is not null”

Venn diagrams normally comprise overlapping circles. The interior of the circle

symbolically represents the elements of the set, while the exterior represents elements that are

not members of the set.

For example: in a two-set Venn diagram, one circle may represent the group of all wooden

objects, while another circle may represent the set of all tables. The overlapping area or

intersection would then represent the set of all wooden tables.

Venn Diagram that shows the intersections of the Greek, Latin and Russian alphabets (upper

case letters)

Page 44

BLANK

Page 45

STUDY UNIT 2

Collection of Data

Contents

Unit

Title

Page

A.

Collection of Data - Preliminary Considerations

47

Exact Definition of the Problem

47

Definition of the Units

47

Scope of the Enquiry

47

Accuracy of the Data

48

B.

Types of Data

49

Primary and Secondary Data

49

Quantitative/Qualitative Categorisation

49

Continuous/Discrete Categorisation

49

C.

Requirements of Statistical Data

51

Homogeneity

51

Completeness

51

Accurate Definition

52

Uniformity

52

D.

Methods of Collecting Data

53

Published Statistics

53

Personal Investigation/Interview

54

Delegated Personal Investigation/Interview

54

Questionnaire

54

E.

Interviewing

57

Advantages of Interviewing

57

Disadvantages of Interviewing

57

F.

Designing the Questionnaire

59

Principles

59

An Example

60

G.

Choice of Method

65

H.

Pareto Distribution and the “80:20” Rule

67

Page 46

BLANK

Page 47

A. COLLECTION OF DATA - PRELIMINARY

CONSIDERATIONS

Even before the collection of data starts, there are some important points to consider when

planning a statistical investigation. Shortly I will give you a list of these together with a few

notes on each; some of them you may think obvious or trivial, but do not neglect to learn

them because they are very often the points which are overlooked. Furthermore, examiners

like to have lists as complete as possible when they ask for them!

What, then, are these preliminary matters?

Exact Definition of the Problem

This is necessary in order to ensure that nothing important is omitted from the enquiry, and

that effort is not wasted by collecting irrelevant data. The problem as originally put to the

statistician is often of a very general type and it needs to be specified precisely before work

can begin.

Definition of the Units

The results must appear in comparable units for any analysis to be valid. If the analysis is

going to involve comparisons, then the data must all be in the same units. It is no use just

asking for “output” from several factories - some may give their answers in numbers of items,

some in weight of items, some in number of inspected batches and so on.

Scope of the Enquiry

No investigation should be got under way without defining the field to be covered. Are we

interested in all departments of our business, or only some? Are we to concern ourselves with

our own business only, or with others of the same kind?

Page 48

Accuracy of the Data

To what degree of accuracy is data to be recorded? For example, are ages of individuals to be

given to the nearest year or to the nearest month or as the number of completed years? If

some of the data is to come from measurements, then the accuracy of the measuring

instrument will determine the accuracy of the results. The degree of precision required in an

estimate might affect the amount of data we need to collect. In general, the more precisely we

wish to estimate a value, the more readings we need to take.

Page 49

B. TYPES OF DATA

Primary and Secondary Data

In its strictest sense, primary data is data which is both original and has been obtained in

order to solve the specific problem in hand. Primary data is therefore raw data and has to be

classified and processed using appropriate statistical methods in order to reach a solution to

the problem.

Secondary data is any data other than primary data. Thus it includes any data which has been

subject to the processes of classification or tabulation or which has resulted from the

application of statistical methods to primary data, and all published statistics.

Quantitative/Qualitative Categorisation

Variables may be either quantitative or qualitative. Quantitative variables, to which we shall

restrict discussion here, are those for which observations are numerical in nature. Qualitative

variables have non-numeric observations, such as colour of hair, although, of course, each

possible non-numeric value may be associated with a numeric frequency.

Continuous/Discrete Categorisation

Variables may be either continuous or discrete. A continuous variable may take any value

between two stated limits (which may possibly be minus and plus infinity). Height, for

example, is a continuous variable, because a person’s height may (with appropriately accurate

equipment) be measured to any minute fraction of a millimetre. A discrete variable, however,

can take only certain values occurring at intervals between stated limits. For most (but not all)

discrete variables, these interval values are the set of integers (whole numbers).

For example, if the variable is the number of children per family, then the only possible

values are 0, 1, 2, ... etc. because it is impossible to have other than a whole number of

children. However, in Ireland, shoe sizes are stated in half-units, and so here we have an

example of a discrete variable which can take the values 1, 11/2, 2, 21/2, etc.

Page 50

BLANK

Page 51

C. REQUIREMENTS OF STATISTICAL DATA

Having decided upon the preliminary matters about the investigation, the statistician must

look in more detail at the actual data to be collected. The desirable qualities of statistical data

are the following:

– Homogeneity

– Completeness

– Accurate definition

– Uniformity.

Homogeneity

The data must be in properly comparable units. “Five houses” means little since five

dwelling houses are very different from five ancestral castles. Houses cannot be compared

unless they are of a similar size or value. If the data is found not to be homogeneous, there

are two methods of adjustment possible.

a) Break down the group into smaller component groups which are homogeneous and

study them separately.

b) Standardise the data. Use units such as “output per man-hour” to compare the output

of two factories of very different size. Alternatively, determine a relationship between

the different units so that all may be expressed in terms of one; in food consumption

surveys, for example, a child may be considered equal to half an adult.

Completeness

Great care must be taken to ensure that no important aspect is omitted from the enquiry.

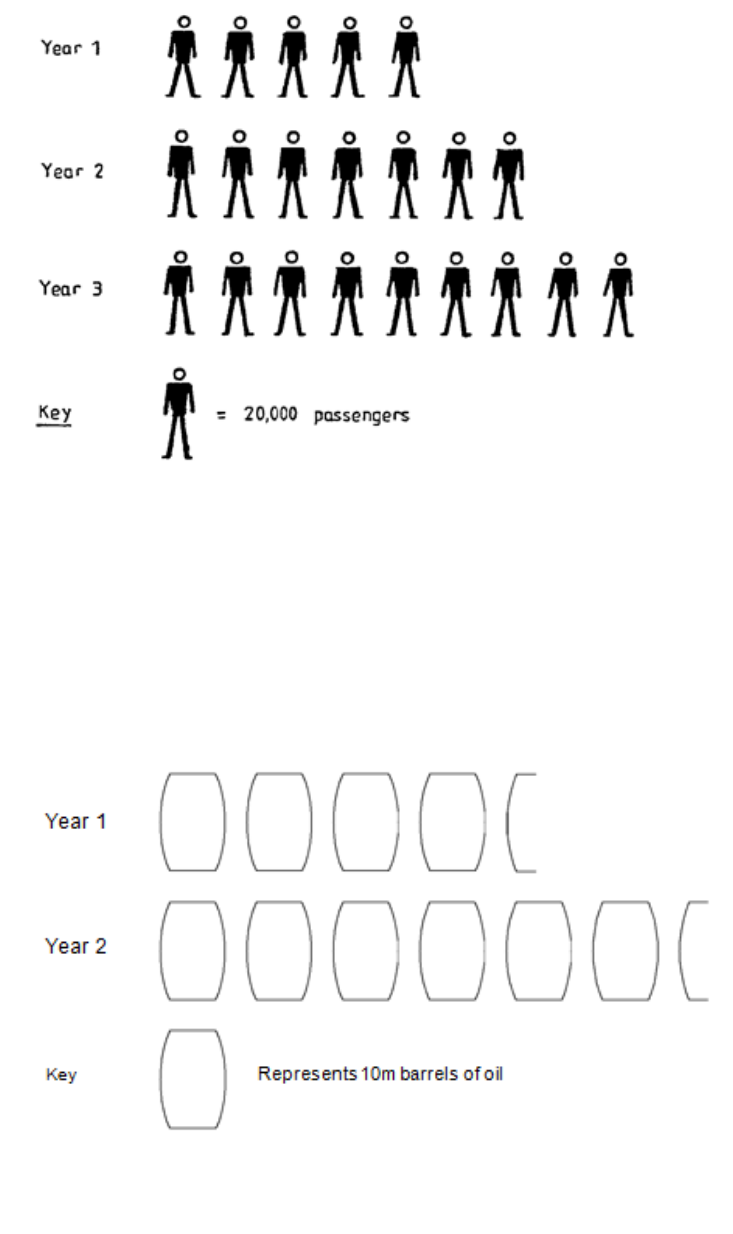

Page 52

Accurate Definition

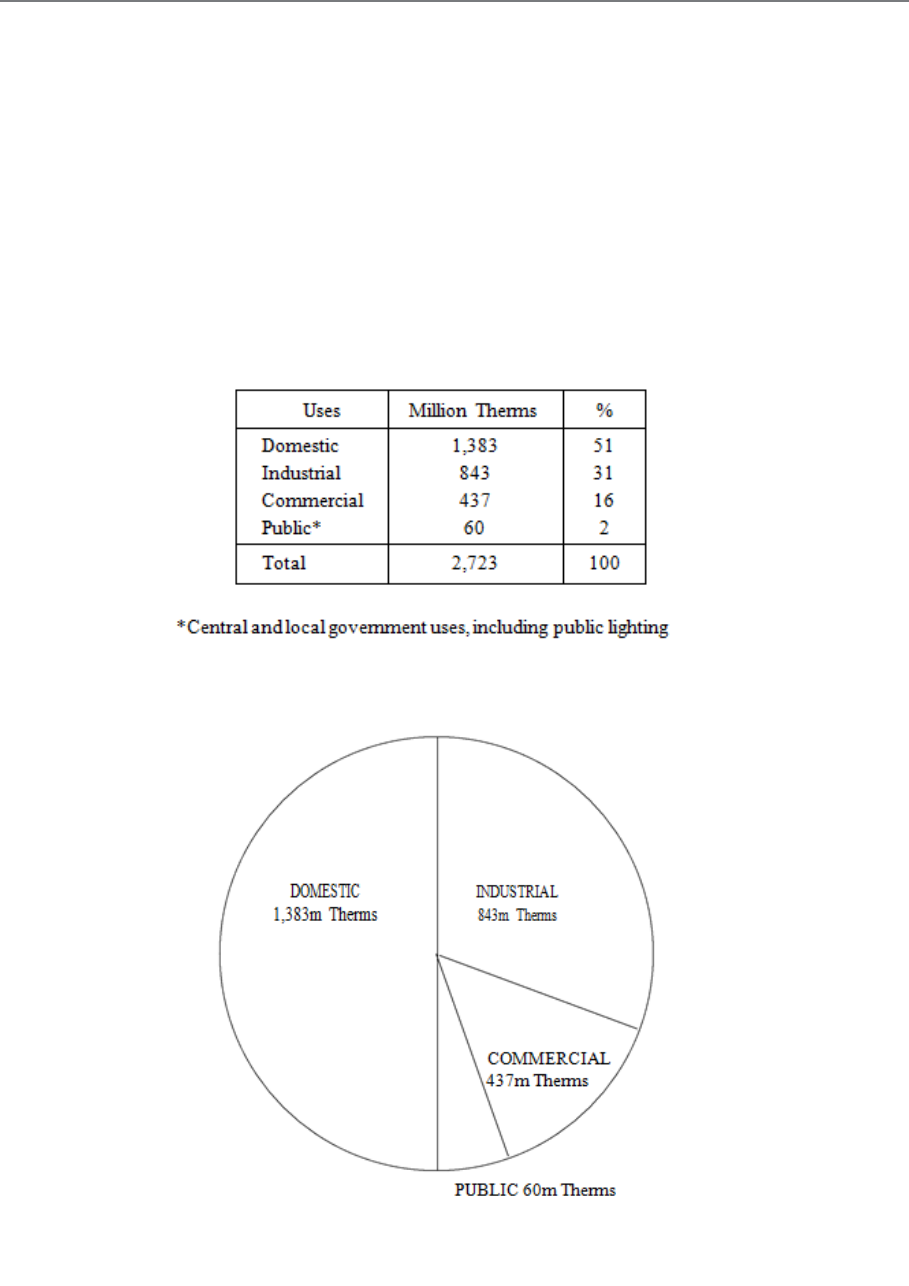

Each term used in an investigation must be carefully defined; it is so easy to be slack about

this and to run into trouble. For example, the term “accident” may mean quite different things

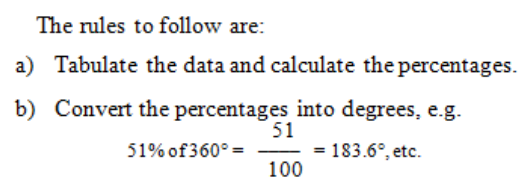

to the injured party, the police and the insurance company! Watch out also, when using other

people’s statistics, for changes in definition. Laws may, for example, alter the definition of an

“indictable offence” or of an “unemployed person”.

Uniformity

The circumstances of the data must remain the same throughout the whole investigation. It is

no use, for example, comparing the average age of workers in an industry at two different

times if the age structure has changed markedly. Likewise, it is not much use comparing a

firm’s profits at two different times if the working capital has changed.

Page 53

D. METHODS OF COLLECTING DATA

When all the foregoing matters have been dealt with, we come to the question of how to

collect the data we require. The methods usually available are as follows:

– Use of published statistics

– Personal investigation/interview

– Delegated personal investigation/interview

– Questionnaire.

Published Statistics

Sometimes we may be attempting to solve a problem that does not require us to collect new

information, but only to reassemble and reanalyse data which has already been collected by

someone else for some other purpose.

We can often make good use of the great amount of statistical data published by

governments, the United Nations, nationalised industries, chambers of trade and commerce

and so on. When using this method, it is particularly important to be clear on the definition of

terms and units and on the accuracy of the data. The source must be reliable and the

information up-to-date.

This type of data is sometimes referred to as secondary data in that the investigator himself

has not been responsible for collecting it and it thus came to him “second-hand”. By contrast,

data which has been collected by the investigator for the particular survey in hand is called

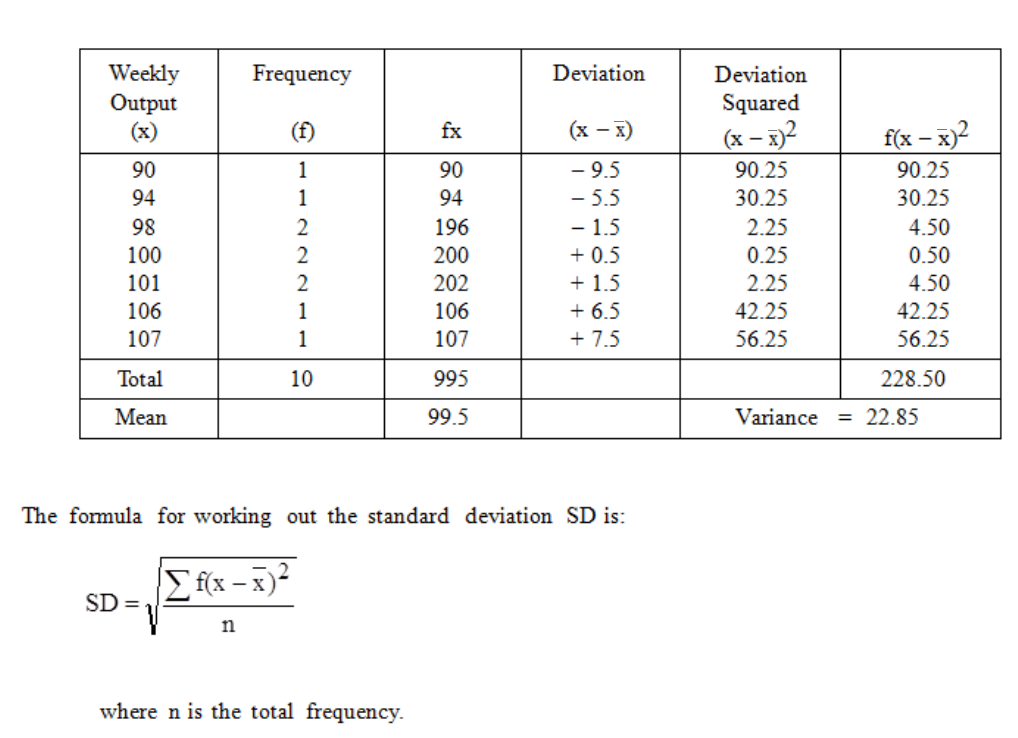

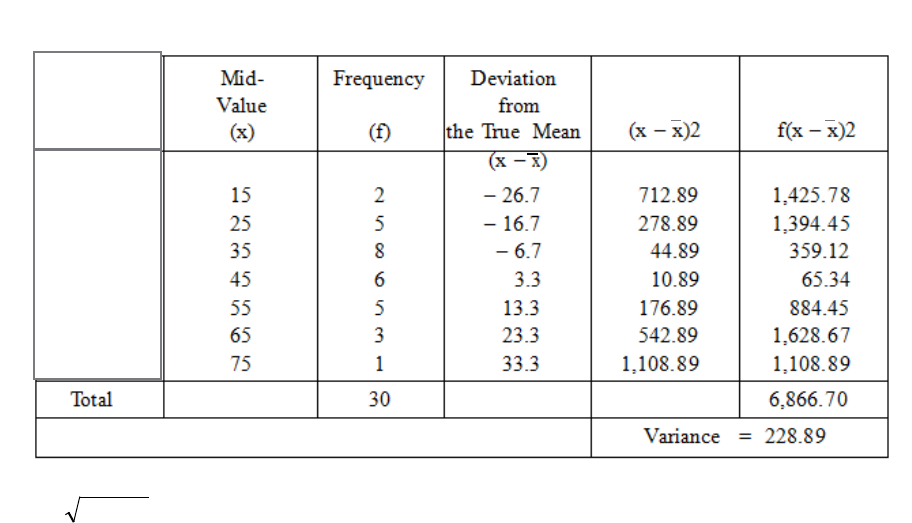

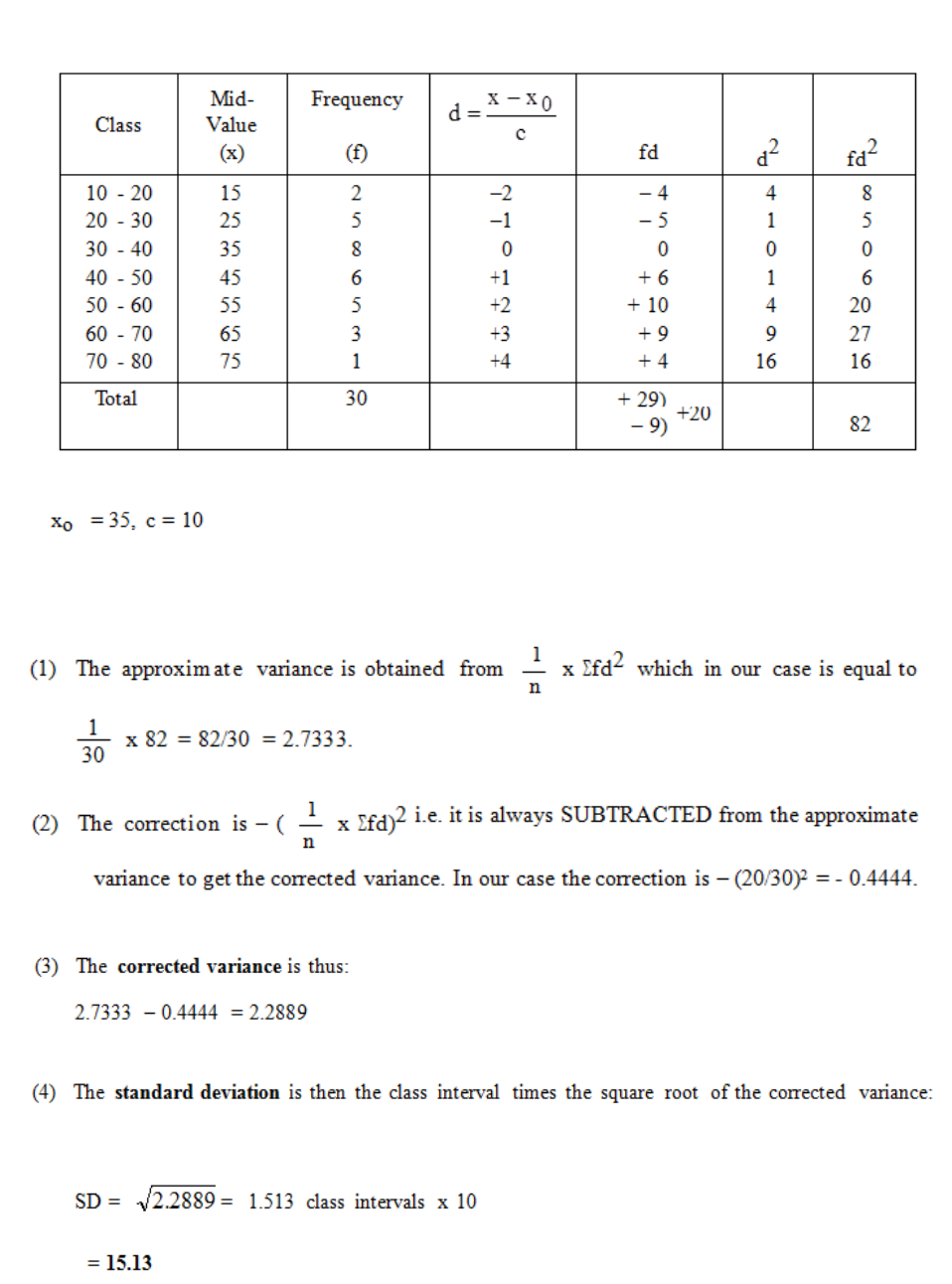

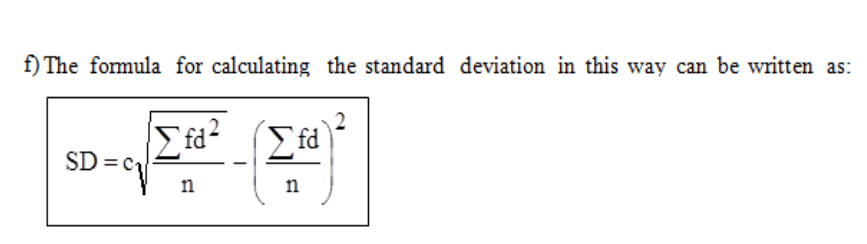

primary data.

The information you require may not be found in one source but parts may appear in several

different sources. Although the search through these may be time-consuming, it can lead to

data being obtained relatively cheaply and this is one of the advantages of this type of data

collection. Of course, the disadvantage is that you could spend a considerable amount of time

looking for information which may not be available.

Another disadvantage of using data from published sources is that the definitions used for

variables and units may not be the same as those you wish to use. It is sometimes difficult to

establish the definitions from published information, but, before using the data, you must

establish what it represent

Page 54

Personal Investigation/Interview

In this method the investigator collects the data himself. The field he can cover is, naturally,

limited. The method has the advantage that the data will be collected in a uniform manner

and with the subsequent analysis in mind. There is sometimes a danger to be guarded against

though, namely that the investigator may be tempted to select data that accords with some of

his preconceived notions.

The personal investigation method is also useful if a pilot survey is carried out prior to the

main survey, as personal investigation will reveal the problems that are likely to occur.

Delegated Personal Investigation/Interview

When the field to be covered is extensive, the task of collecting information may be too great

for one person. Then a team of selected and trained investigators or interviewers may be

used. The people employed should be properly trained and informed of the purposes of the

investigation; their instructions must be very carefully prepared to ensure that the results are

in accordance with the “requirements” described in the previous section of this study unit. If

there are many investigators, personal biases may tend to cancel out.

Care in allocating the duties to the investigators can reduce the risks of bias. For example, if

you are investigating the public attitude to a new drug in two towns, do not put investigator A

to explore town X and investigator B to explore town Y, because any difference that is

revealed might be due to the towns being different, or it might be due to different personal

biases on the part of the two investigators. In such a case, you would try to get both people to

do part of each town.

Questionnaire

In some enquiries the data consists of information which must be supplied by a large number

of people. Then a very convenient way to collect the data is to issue questionnaire forms to

the people concerned and ask them to fill in the answers to a set of printed questions. This

method is usually cheaper than delegated personal investigation and can cover a wider field.

A carefully thought-out questionnaire is often also used in the previous methods of

investigation in order to reduce the effect of personal bias.

Page 55

The distribution and collection of questionnaires by post suffers from two main drawbacks:

a) The forms are completed by people who may be unaware of some of the requirements

and who may place different interpretations on the questions - even the most carefully

worded ones!

b) There may be a large number of forms not returned, and these may be mainly by

people who are not interested in the subject or who are hostile to the enquiry. The

result is that we end up with completed forms only from a certain kind of person and

thus have a biased sample.

It is essential to include a reply-paid envelope to encourage people to respond.

If the forms are distributed and collected by interviewers, a greater response is likely and

queries can be answered. This is the method used, for example, in the Population Census.

Care must be taken, however, that the interviewers do not lead respondents in any way.

Page 56

BLANK

Page 57

E. INTERVIEWING

Advantages of Interviewing

There are many advantages of using interviewers in order to collect information.

The major one is that a large amount of data can be collected relatively quickly and cheaply.

If you have selected the respondents properly and trained the interviewers thoroughly, then

there should be few problems with the collection of the data.

This method has the added advantage of being very versatile since a good interviewer can

adapt the interview to the needs of the respondent. Similarly, if the answers given to the

questions are not clear, then the interviewer can ask the respondent to elaborate on them.

When this is necessary, the interviewer must be very careful not to lead the respondent into

altering rather than clarifying the original answers. The technique for dealing with this

problem must be tackled at the training stage.

This “face-to-face” technique will usually produce a high response rate. The response rate is

determined by the proportion of interviews that are successful.

Another advantage of this method of collecting data is that with a well-designed

questionnaire it is possible to ask a large number of short questions of the respondent in one

interview. This naturally means that the cost per question is lower than in any other method.

Disadvantages of Interviewing

Probably the biggest disadvantage of this method of collecting data is that the use of a large

number of interviewers leads to a loss of direct control by the planners of the survey.

Mistakes in selecting interviewers and any inadequacy of the training programme may not be

recognised until the interpretative stage of the survey is reached. This highlights the need to

train interviewers correctly. It is particularly important to ensure that all interviewers ask

questions in a similar manner. Even with the best will in the world, it is possible that an

inexperienced interviewer, just by changing the tone of his or her voice, may give a different

emphasis to a question than was originally intended.

In spite of these difficulties, this method of data collection is widely used as questions can be

answered cheaply and quickly and, given the correct approach, the technique can achieve

high response rates.

Page 58

BLANK

Page 59

F. DESIGNING THE QUESTIONNAIRE

Principles

A "questionnaire" can be defined as "a formulated series of questions, an interrogatory" and

this is precisely what it is. For a statistical enquiry, the questionnaire consists of a sheet (or

possibly sheets) of paper on which there is a list of questions the answers to which will form

the data to be analysed. When we talk about the "questionnaire method" of collecting data,

we usually have in mind that the questionnaires are sent out by post or are delivered at

people’s homes or offices and left for them to complete. In fact, however, the method is very

often used as a tool in the personal investigation methods already described.

The principles to be observed when designing a questionnaire are as follows:

a) Keep it as short as possible, consistent with getting the right results.

b) Explain the purpose of the investigation so as to encourage people to give the

answers.

c) Individual questions should be as short and simple as possible.

d) If possible, only short and definite answers like "Yes", "No", or a number of some

sort should be called for.

e) Questions should be capable of only one interpretation.

f) There should be a clear logic in the order in which the questions are asked.

g) There should be no leading questions which suggest the preferred answer.

h) The layout should allow easy transfer for computer input.

i) Where possible, use the "alternative answer" system in which the respondent has to

choose between several specified answers.

j) The respondent should be assured that the answers will be treated confidentially and

that the truth will not be used to his or her detriment.

k) No calculations should be required of the respondent.

The above principles should always be applied when designing a questionnaire and, in

addition, you should understand them well enough to be able to remember them all if you are

asked for them in an examination question. They are principles and not rigid rules - often one

has to go against some of them in order to get the right information. Governments can often

ignore these principles because they can make the completion of the questionnaire

compulsory by law, but other investigators must follow the rules as far as practicable in order

Page 60

to make the questionnaire as easy to complete as possible - otherwise they will receive no

replies.

An Example

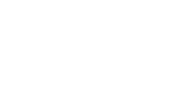

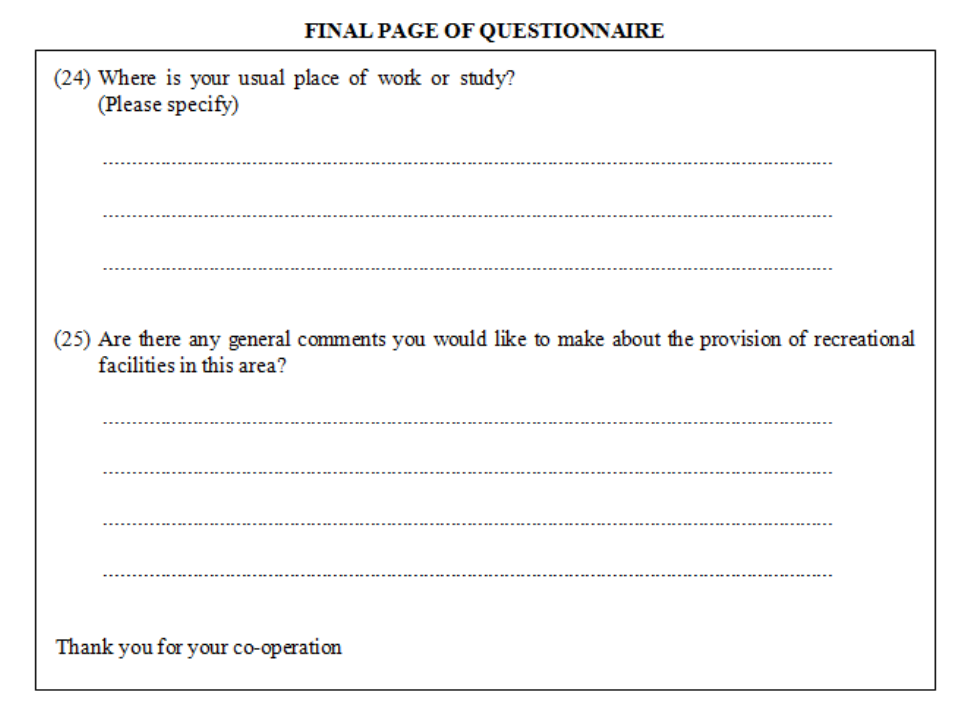

An actual example of a self-completion questionnaire (Figure 7) is now shown as used by an

educational establishment in a research survey. Note that, as the questionnaire is incorporated

in this booklet, it does not give a true format. In practice, the questionnaire was not spread

over so many pages.

Figure 2.1

Page 61

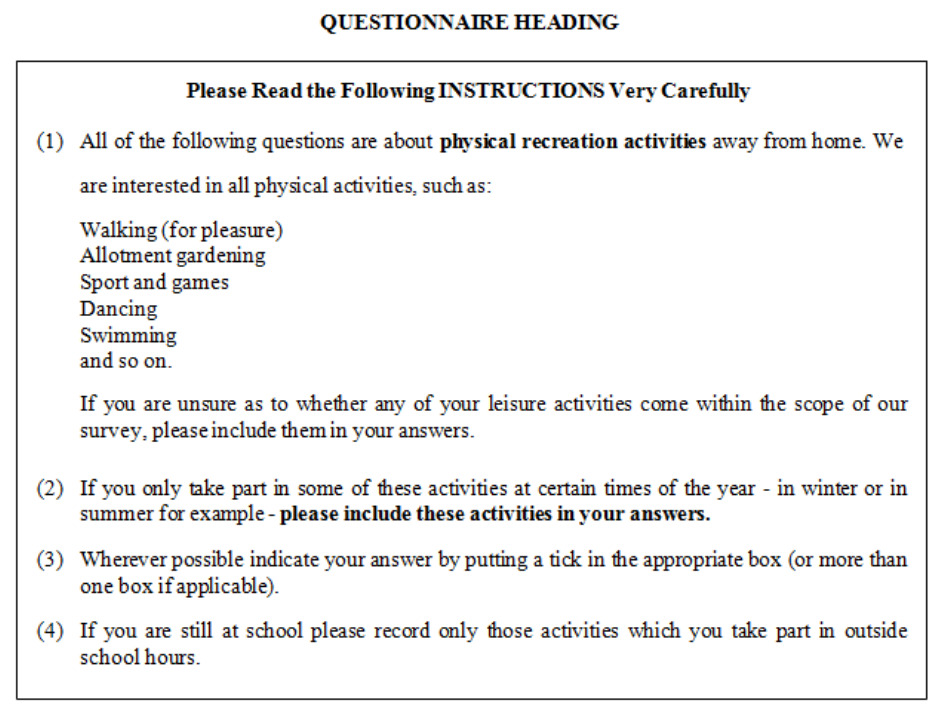

Figure 2.2

Page 62

Figure 2.3

Page 63

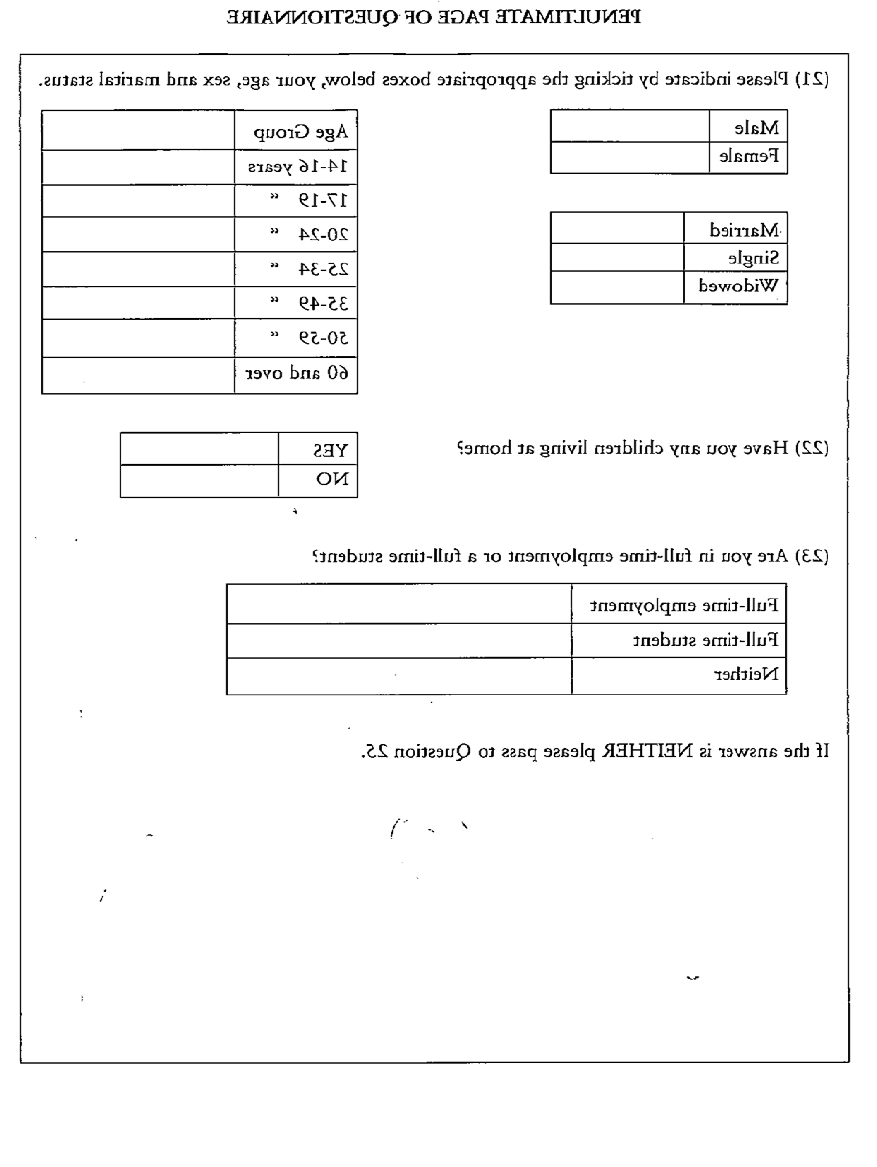

Figure 2.4

Page 64

BLANK

Page 65

G. CHOICE OF METHOD

Choice is difficult between the various methods, as the type of information required will

often determine the method of collection. If the data is easily obtained by automatic methods

or can be observed by the human eye without a great deal of trouble, then the choice is easy.

The problem comes when it is necessary to obtain information by questioning respondents.

The best guide is to ask yourself whether the information you want requires an attitude or

opinion or whether it can be acquired from short yes/no type or similar simple answers. If it is

the former, then it is best to use an interviewer to get the information; if the latter type of data

is required, then a postal questionnaire would be more useful.

Do not forget to check published sources first to see if the information can be found from

data collected for another survey.

Another yardstick worth using is time. If the data must be collected quickly, then use an

interviewer and a short simple questionnaire. However, if time is less important than cost,

then use a postal questionnaire, since this method may take a long time to collect relatively

limited data, but is cheap.

Sometimes a question in the examination paper is devoted to this subject. The tendency is for

the question to state the type of information required and ask you to describe the appropriate

method of data collection giving reasons for your choice.

More commonly, specific definitions and explanations of various terms, such as interviewer

bias, are contained in multi-part questions.

Page 66

BLANK

Page 67

H. PARETO DISTRIBUTION AND THE “80:20” RULE

The Pareto distribution, named after the Italian economist Vilfredo Pareto, is a power law

probability distribution that coincides with social, scientific, geophysical, actuarial, and many

other types of observable phenomena.

Probability density function

Pareto Type I probability density functions for various α (labeled "k") with xm = 1. The horizontal axis is the x

parameter. As α → ∞ the distribution approaches δ(x − xm) where δ is the Dirac delta function

Cumulative distribution function

Pareto Type I cumulative distribution functions for various α(labeled "k") with xm = 1. The

horizontal axis is the x parameter.

Page 68

The “80:20 law”, according to which 20% of all people receive 80% of all income, and 20%

of the most affluent 20% receive 80% of that 80%, and so on, holds precisely when the

Pareto index is a=log4(5) = log (5)/log(4), approximately 1.1161.

Project managers know that 20% of the work consumes 80% of their time and resources.

You can apply the 80/20 rule to almost anything, from science of management to the physical

world.

80% of your sales will come from 20% of your sales staff. 20% of your staff will cause 80%

of your problems, but another 20% of your staff will provide 80% of your production. It

works both ways.

The value of the Pareto Principle for a manager is that it reminds you to focus on the 20%

that matters. Of the things you do during your day, only 20% really matter. Those 20%

produce 80% of your results. Identify and focus on those things.

Page 69

STUDY UNIT 3

Tabulation and Grouping of Data

Contents

Unit

Title

Page

A.

Introduction to Classification and Tabulation of Data

71

Example

71

B.

Forms of Tabulation

75

Simple Tabulation

75

Complex Tabulation

76

C.

Secondary Statistical Tabulation

79

D.

Rules for Tabulation

81

The Rules

81

An Example of Tabulation

82

E.

Sources of Data & Presentation Methods

85

Source, nature, application and use

85

Role of statistics in business analysis and decision making

86

Numerical data

90

Page 70

BLANK

Page 71

A. INTRODUCTION TO CLASSIFICATION AND

TABULATION OF DATA

Having completed the survey and collected the data, we need to organise it so that we can

extract useful information and then present our results. The information will very often

consist of a mass of figures in no very special order. For example, we may have a card index

of the 3,000 workers in a large factory; the cards are probably kept in alphabetical order of

names, but they will contain a large amount of other data such as wage rates, age, sex, type of

work, technical qualifications and so on. If we are required to present to the factory

management a statement about the age structure of the labour force (both male and female),

then the alphabetical arrangement does not help us, and no one could possibly gain any idea

about the topic from merely looking through the cards as they are. What is needed is to

classify the cards according to the age and sex of the worker and then present the results of

the classification as a tabulation. The data in its original form, before classification, is usually

known as “raw data”.

Example

We cannot, of course, give here an example involving 3,000 cards, but you ought now to

follow this “shortened version” involving only a small number of items.

a) Raw Data

15 cards in alphabetical order:

Ayim, L. Mr 39 years

Balewa, W. Mrs 20 “

Buhari, A. Mr 22 “

Boro, W. Miss 22 “

Chahine, S. Miss 32 “

Diop, T. Mr 30 “

Diya, C. Mrs 37 “

Eze, D. Mr 33 “

Egwu, R. Mr 45 “

Gowon, J. Mrs 42 “

Gaxa, F. Miss 24 “

Gueye, W. Mr 27 “

Jalloh, J. Miss 28 “

Jaja, J. Mr 44 “

Jang, L. Mr 39 “

Page 72

b) Classification

(i) According to Sex

Ayim, L. Mr 39 years Balewa, W. Mrs 20 years

Buhari, A. Mr 22 “ Boro, W. Miss 22 “

Diop, T. Mr 30 “ Chahine, S. Miss 32 “

Eze, D. Mr 33 “ Diya. C. Mrs 37 “

Egwu, R. Mr 45 “ Gowon, J. Mrs 42 “

Gueye, W. Mr 27 “ Gaxa, F. Miss 24 “

Jaja, J. Mr 44 “ Jalloh, J. Miss 28 “

Jang, L. Mr 39 “

(ii) According to Age (in Groups)

Balewa, W. Mrs 20 years Ayim, L. Mr 39 years

Buhari, A. Mr 22 “ Chahine, S. Miss 32 “

Boro, W. Miss 22 “ Diop, T. Mr 30 “

Gaxa, F. Miss 24 “ Diya, C. Mrs 37 “

Gueye, W. Mr 27 “ Eze, D. Mr 33 “

Jalloh, J. Miss 28 “ Jang, L. Mr 39 “

Egwu, R. Mr 45 years

Gowon, J. Mrs 42 “

Jaja, J. Mr 44 “

Page 73

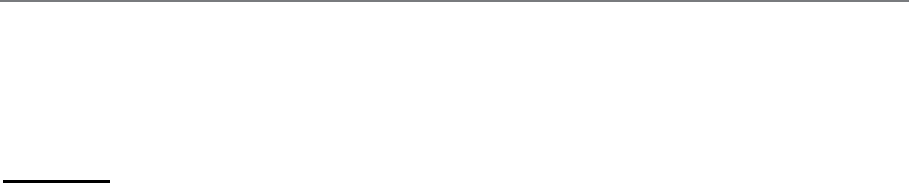

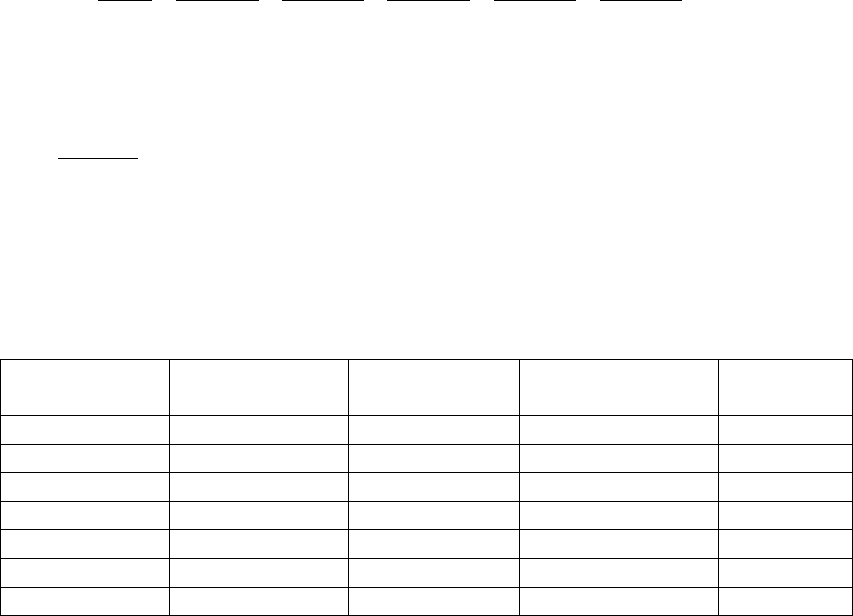

c) Tabulation

The number of cards in each group, after classification, is counted and the results presented in

a table.

Table 3.2

You should look through this example again to make quite sure that you understand what has

been done.

You are now in a position to appreciate the purpose behind classification and tabulation - it is

to condense an unwieldy mass of raw data to manageable proportions and then to present the

results in a readily understandable form. Be sure that you appreciate this point, because

examination questions involving tabulation often begin with a first part which asks, "What

is the object of the tabulation of statistical data?", or words to that effect.

Page 74

BLANK

Page 75

B FORMS OF TABULATION

We classify the process of tabulation into Simple Tabulation and Complex or Matrix

Tabulation.

Simple Tabulation

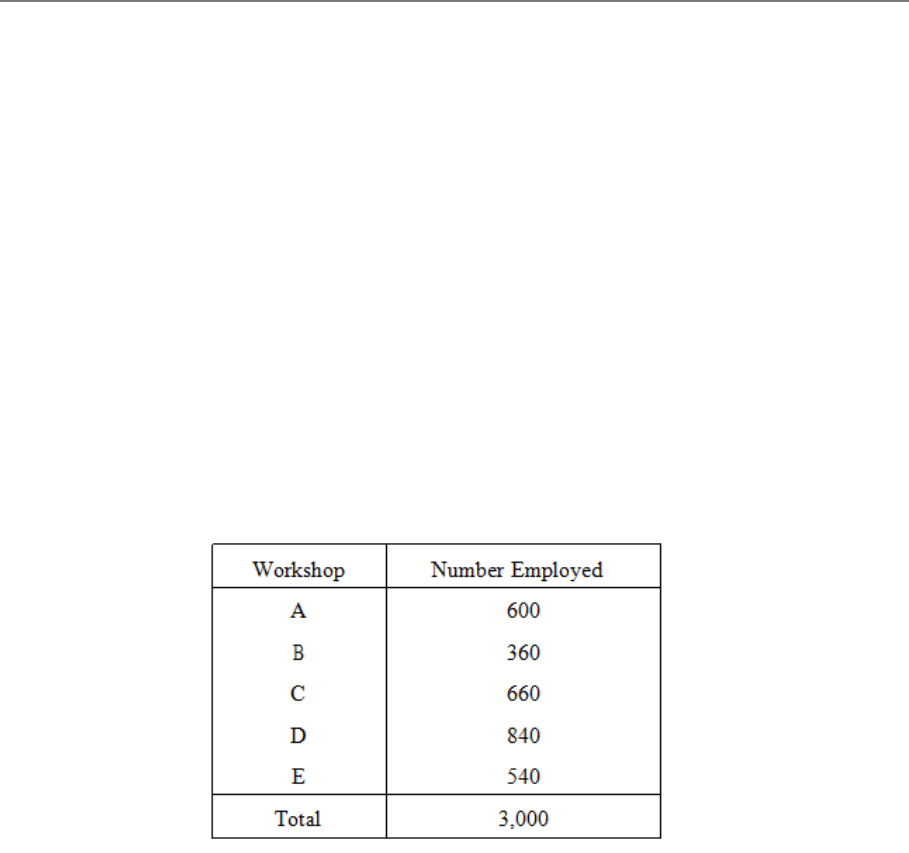

This covers only one aspect of the set of figures. The idea is best conveyed by an example.

Consider the card index mentioned earlier; each card may carry the name of the workshop in

which the person works. A question as to how the labour force is distributed can be answered

by sorting the cards and preparing a simple table thus:

Table 3.3

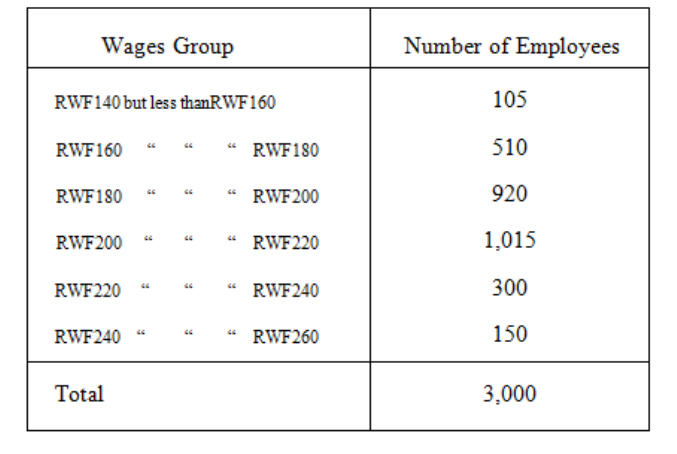

Another question might have been, "What is the wage distribution in the works?", and the

answer can be given in another simple table (see Table 3.4).

Page 76

Table 3.4

Note that such simple tables do not tell us very much - although it may be enough for the

question of the moment.

Complex Tabulation

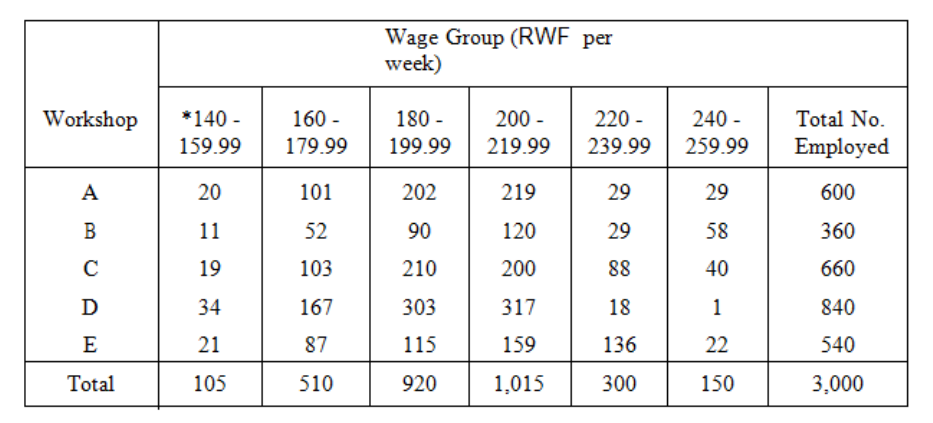

This deals with two or more aspects of a problem at the same time. In the problem just

studied, it is very likely that the two questions would be asked at the same time, and we could

present the answers in a complex table or matrix.

Page 77

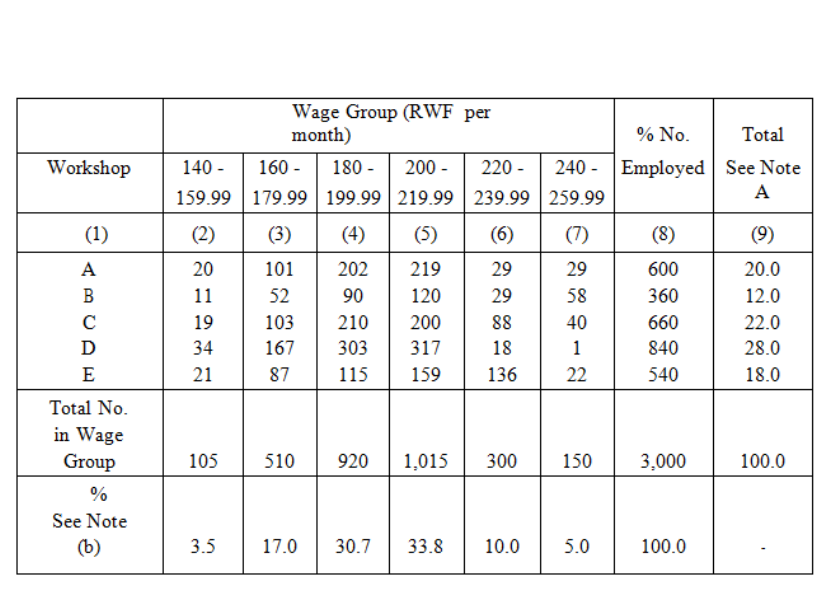

Table 3.5

Note *140 - 159.99 is the same as "140 but less than 160" and similarly for the other

columns.

This table is much more informative than are the two simple tables, but it is more

complicated. We could have divided the groups further into, say, male and female workers, or

into age groups. In a later part of this study unit I will give you a list of the rules you should

try to follow in compiling statistical tables, and at the end of that list you will find a table

relating to our 3,000 workers, which you should study as you read the rules.

Page 78

BLANK

Page 79

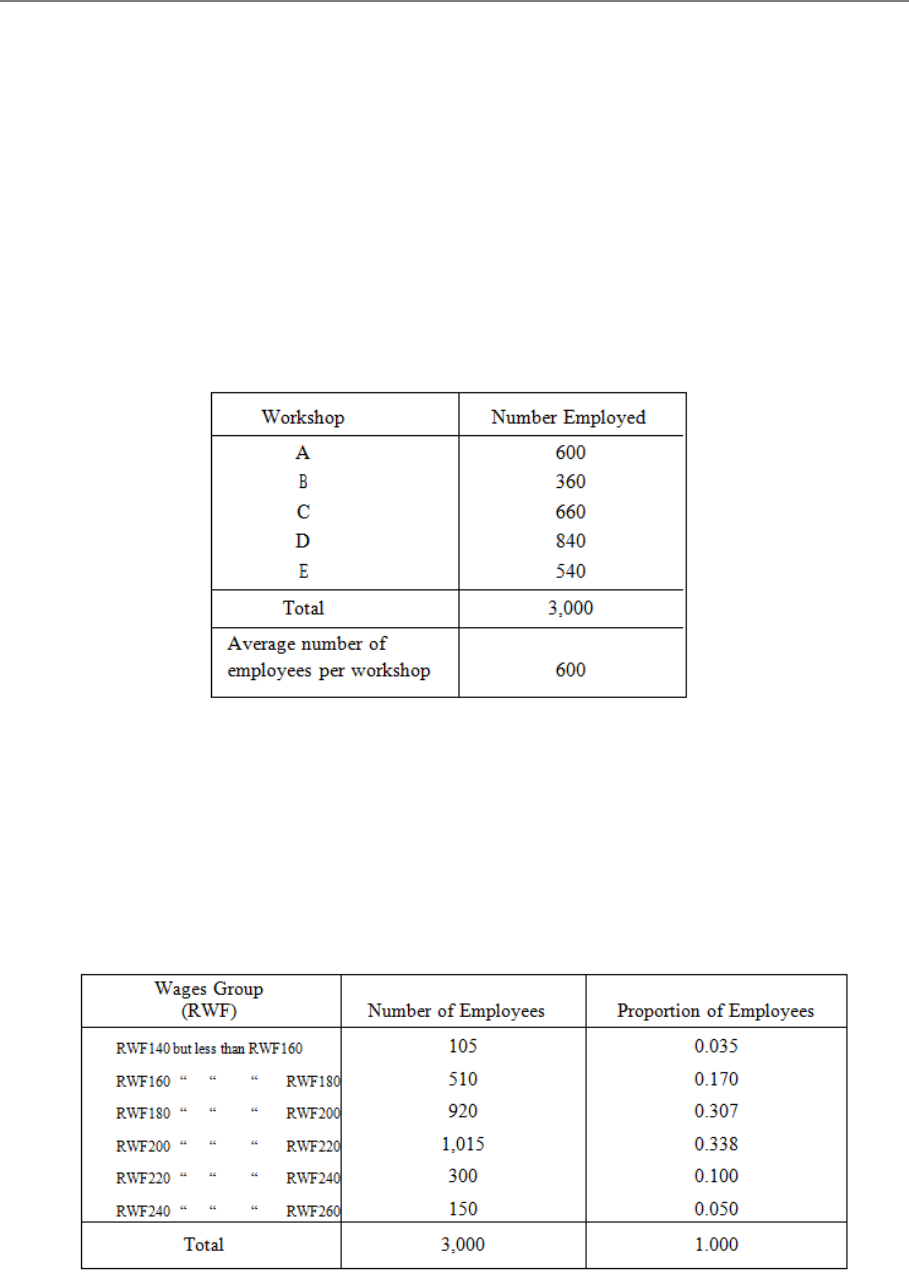

C. SECONDARY STATISTICAL TABULATION

So far, our tables have merely classified the already available figures, the primary statistics,

but we can go further than this and do some simple calculations to produce other figures,

secondary statistics. As an example, take the first simple table illustrated above, and calculate

how many employees there are on average per workshop. This is obtained by dividing the

total (3,000) by the number of shops (5), and the table appears thus:

Table 3.6

This average is a "secondary statistic". For another example, we may take the second simple

table given above and calculate the proportion of workers in each wage group, thus:

Table 3.7

Page 80

These proportions are "secondary statistics". In commercial and business statistics, it is more

usual to use percentages than proportions; in the above tables these would be 3.5%, 17%,

30.7%, 33.8%, 10% and 5%.

Secondary statistics are not, of course, confined to simple tables, they are used in complex

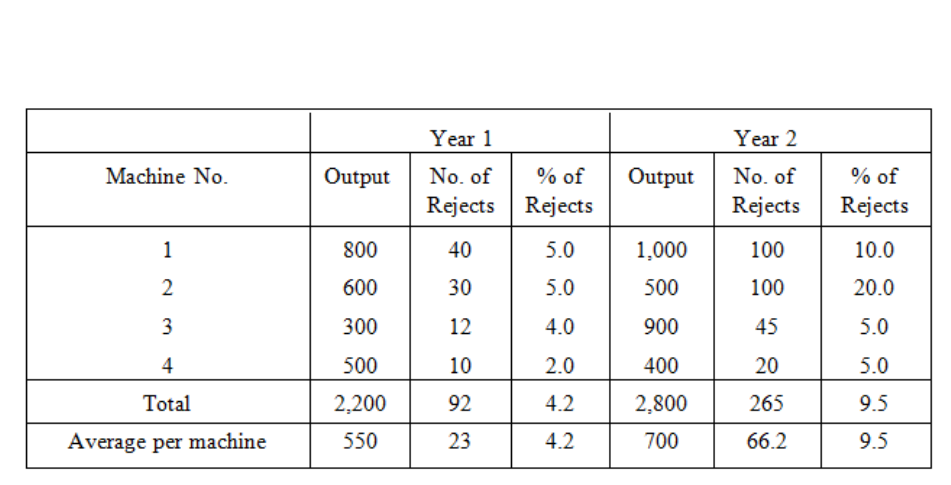

tables too, as in this example:

The percentage columns and the average line show secondary statistics. All the other figures

are primary statistics.

Note carefully that percentages cannot be added or averaged to get the percentage of a

total or of an average. You must work out such percentages on the totals or averages

themselves.

Another danger in the use of percentages has to be watched, and that is that you must not

forget the size of the original numbers. Take, for example, the case of two doctors dealing

with a certain disease. One doctor has only one patient and he cures him - 100% success! The

other doctor has 100 patients of whom he cures 80 - only 80% success! You can see how very

unfair it would be on the hard-working second doctor to compare the percentages alone.

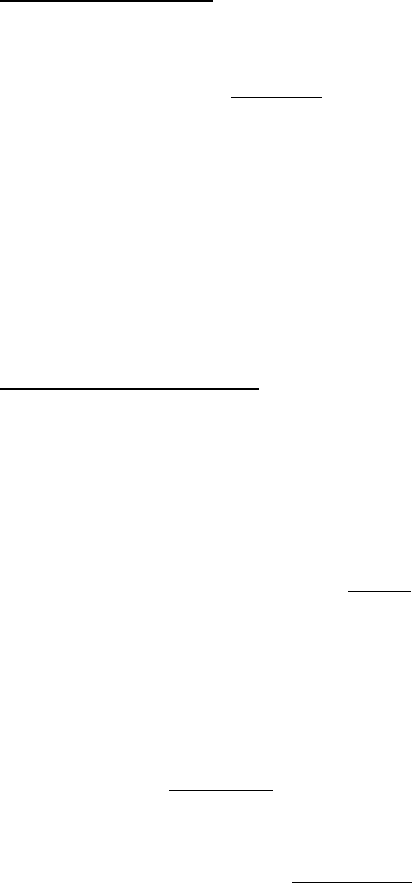

Table 3.8: Inspection Results for a Factory

Product in Two Successive Years

Page 81

D. RULES FOR TABULATION

The Rules

There are no absolute rules for drawing up statistical tables, but there are a few general

principles which, if borne in mind, will help you to present your data in the best possible way.

Here they are:

a) Try not to include too many features in any one table (say, not more than four or five)

as otherwise it becomes rather clumsy. It is better to use two or more separate tables.

b) Each table should have a clear and concise title to indicate its purpose.

c) It should be very clear what units are being used in the table (tonnes, RWF, people,

RWF000, etc.).

d) Blank spaces and long numbers should be avoided, the latter by a sensible degree of

approximation.

e) Columns should be numbered to facilitate reference.

f) Try to have some order to the table, using, for example, size, time, geographical

location or alphabetical order.

g) Figures to be compared or contrasted should be placed as close together as possible.

h) Percentages should be pleased near to the numbers on which they are based.

i) Rule the tables neatly - scribbled tables with freehand lines nearly always result in

mistakes and are difficulty to follow. However, it is useful to draw a rough sketch first

so that you can choose the best layout and decide on the widths of the columns.

j) Insert totals where these are meaningful, but avoid "nonsense totals". Ask yourself

what the total will tell you before you decide to include it. An example of such a

"nonsense total" is given in the following table:

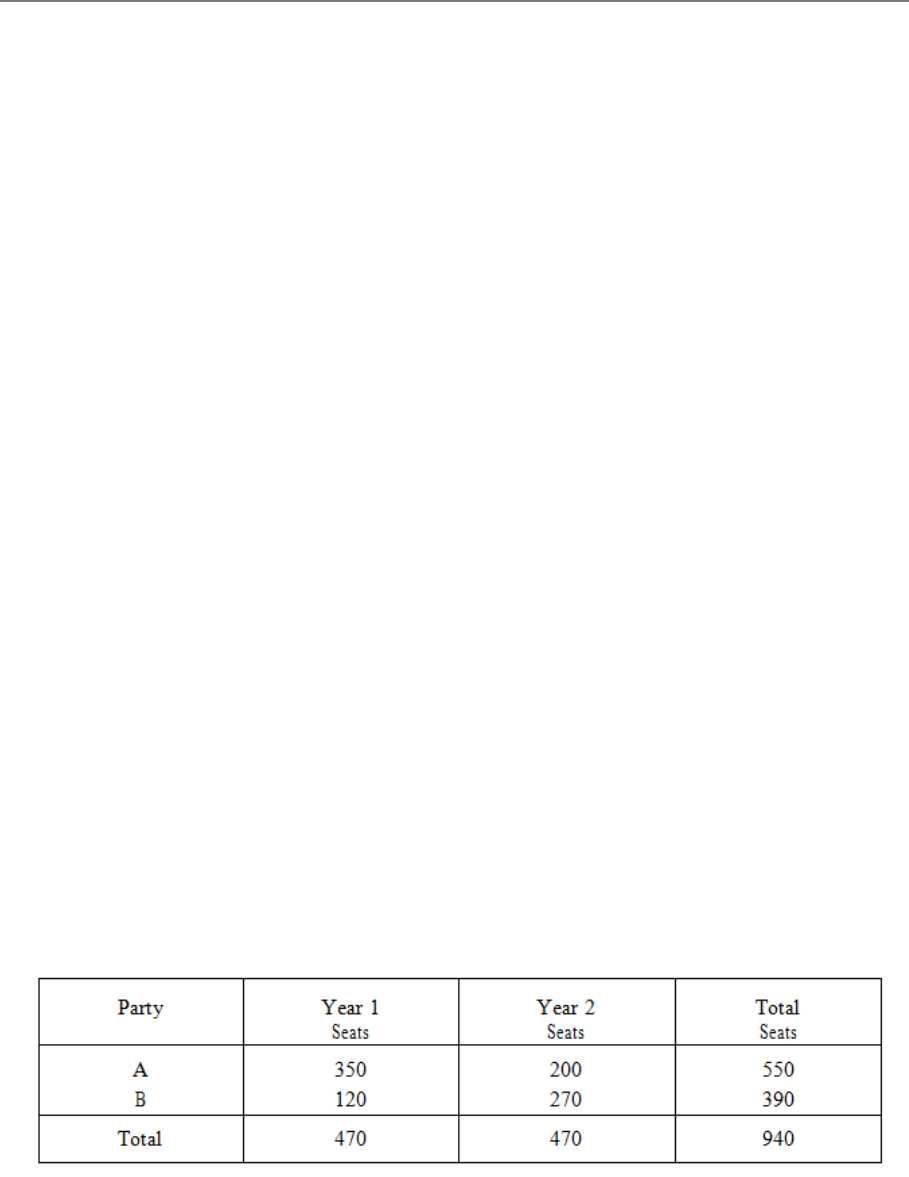

Table 3.9 : Election Results

Page 82

The totals (470) at the foot of the two columns make sense because they tell us the total

number of seats being contested, but the totals in the final column (550, 390, 940) are

"nonsense totals" for they tell us nothing of value.

k) If numbers need to be totalled, try to place them in a column rather than along a row

for easier computation.

l) If you need to emphasise particular numbers, then underlining, significant spacing or

heavy type can be used. If data is lacking in a particular instance, then insert an

asterisk (*) in the empty space and give the reasons for the lack of data in a footnote.

m) Footnotes can also be used to indicate, for example, the source of secondary data, a

change in the way the data has been recorded, or any special circumstances which

make the data seem odd.

An Example of Tabulation

It is not always possible to obey all of these rules on any one occasion, and there may be

times when you have a good reason for disregarding some of them. But only do so if the

reason is really good - not just to save you the bother of thinking! Study now the layout of the

following table (based on our previous example of 3,000 workpeople) and check through the

list of rules to see how they have been applied.

Table 3.10: ABC & Co. Wage Structure of Labour Force Numbers

of Persons in Specified Categories

Page 83

Note (a) Total no. employed in workshop as a percentage of the total workforce.

Note (b) Total no. in wage group as a percentage of the total workforce.

Table 3.10 can be called a "twofold" table as the workforce is broken down by wage and

workshop.

Page 84

BLANK

Page 85

E. SOURCES OF DATA AND PRESENTATION

METHODS

Sources, nature, application and use:

Sources

Data is generally found through research or as the result of a survey. Data which is found

from a survey is called primary data; it is data which is collected for a particular reason or

research project. For example, if your firm wished to establish how much money tourists

spend on cultural events when they come to Rwanda or how long a particular process takes

on average to complete in a factory. In this case the data will be taken in raw form, i.e. lots of

figures and then analysed by grouping the data into more manageable groups. The other

source of data is secondary data. This is data which is already available (government

statistics, company reports etc). As a business person you can take these figures and use them

for whatever purpose you require.

Nature of data.

Data is classified according to the type of data it is. The classifications are as follows:

Categorical data: example: Do you currently own any stocks or bonds? Yes No

This type of data is generally plotted using a bar chart or pie chart.

Numerical data: This is usually divided into discrete or continuous data.

How many cars do you own? This is discrete data. This is data that arises from a counting

process.

How tall are you? This is continuous data. This is data that arises from a measuring process.

Or the figures cannot be measured precisely. For example: clock in times of the workers in a

particular shift: 8:23; 8:14; 8:16....

Whether data is discrete or continuous will determine the most appropriate method of

presentation.

Page 86

Descriptive

statistics

collecting

presenting

analysing

Precaution in use.

As a business person it is important that you are cautions when reading data and statistics. In

order to draw intelligent and logical conclusions from data you need to understand the

various meanings of statistical terms.

Role of statistics in business analysis and decision making.

In the business world, statistics has four important applications:

– To summarise business data

– To draw conclusions from that data

– To make reliable forecasts about business activities

– To improve business processes.

The field of statistics is generally divided into two areas.

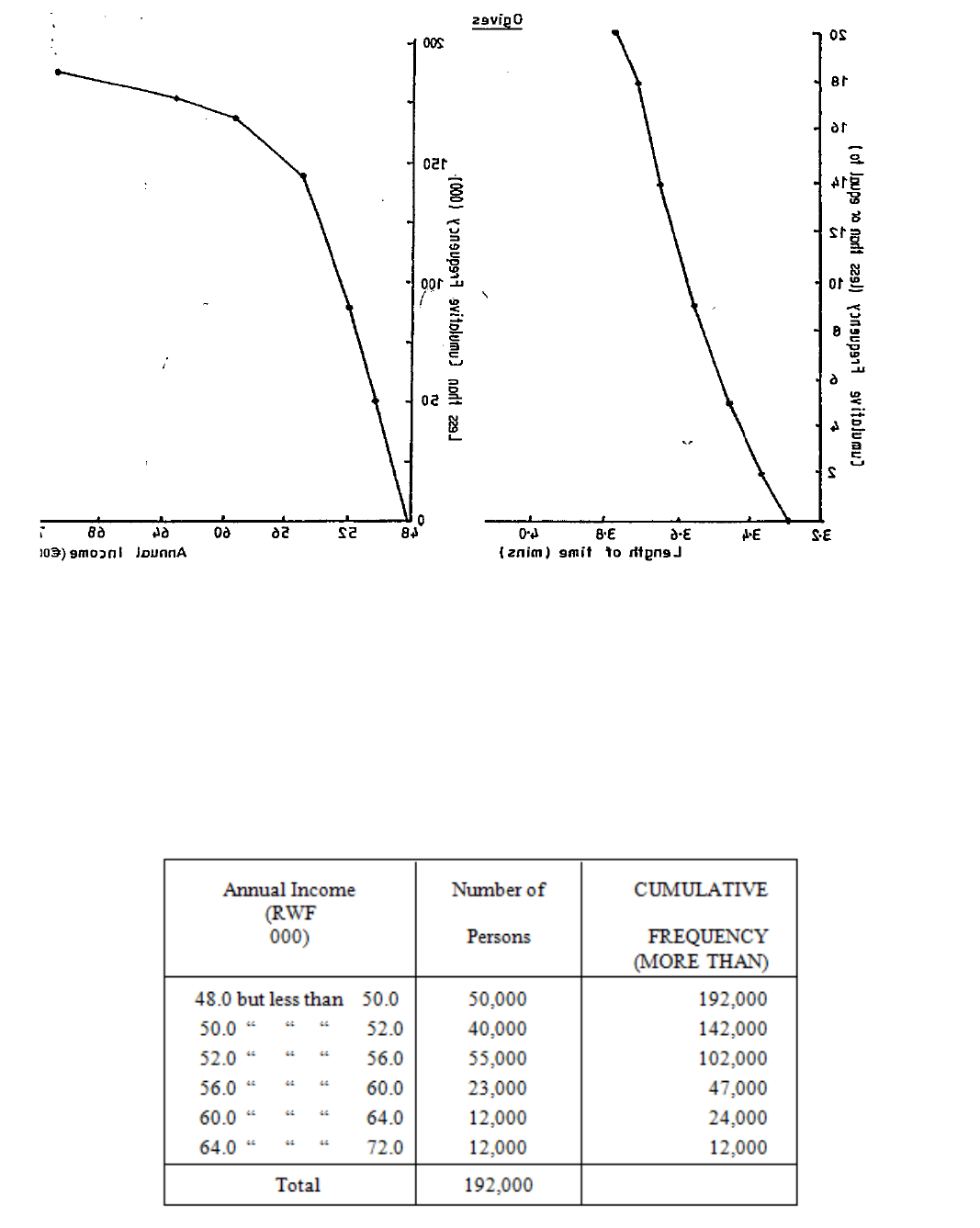

Figure 3.1

Descriptive statistics allows

you to create different tables

and charts to summarise data.

It also provides statistical

measures such as the mean,

median, mode, standard

deviation etc to describe

different characteristics of the

data

Page 87

Inferential

statistics

sampling

estimation

from

sample

informed

opinion

Figure 3.2

Improving business processes involves using managerial approaches that focus on quality

improvements such as Six Sigma. These approaches are data driven and use statistical

method to develop these models.

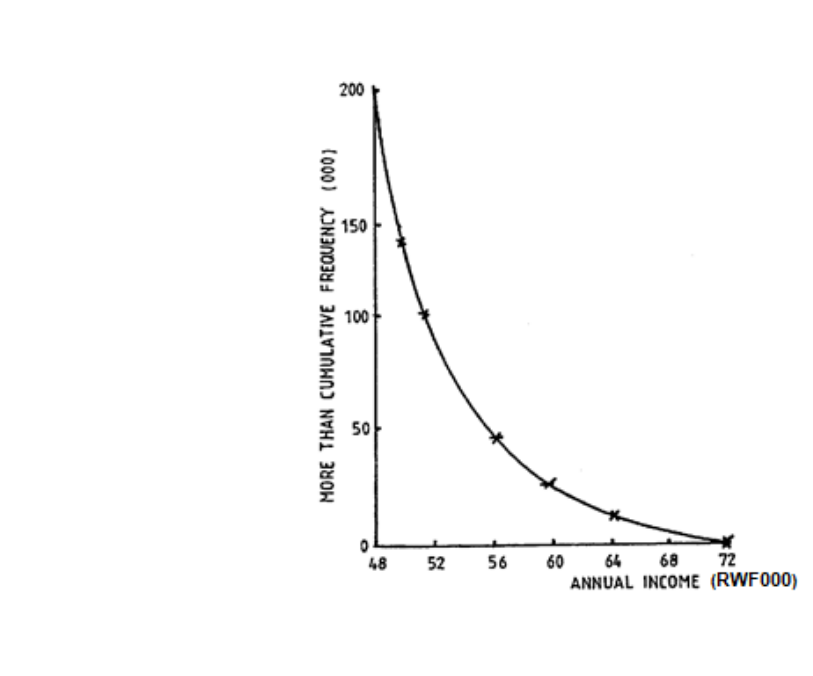

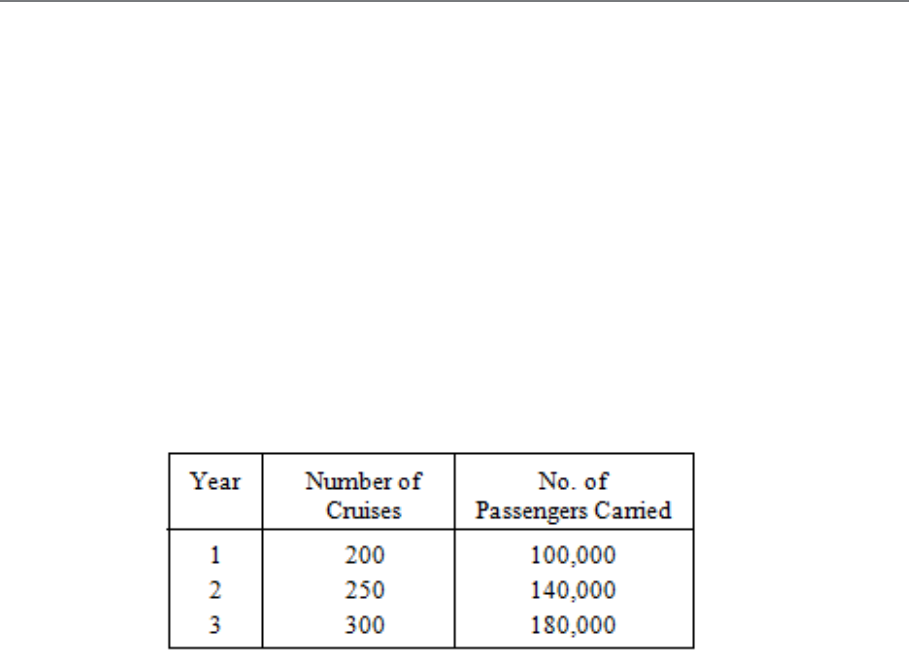

– Presentation of data, use of bar charts, histograms, pie charts, graphs, tables,

frequency distributions, cumulative distributions, Ogives.

– Their uses and interpretations.

If you look at any magazine or newspaper article, TV show, election campaign etc you will

see many different charts depicting anything from the most popular holiday destination to the

gain in company profits. The nice thing about studying statistics is that once you understand

the concepts the theory remains the same for all situations and you can easily apply your

knowledge to whatever situation you are in.

Tables and charts for categorical data:

When you have categorical data, you tally responses into categories and then present the

frequency or percentage in each category in tables and charts.

The summary table indicates the frequency, amount or percentage of items in each category,

so that you can differentiate between the categories.

Supposing a questionnaire asked people how they preferred to do their banking:

Drawing conclusions about

your data is the fundamental

point of inferential statistics.

Using these methods allows the

researcher to draw conclusions

based on data rather than on

intuition.

Page 88

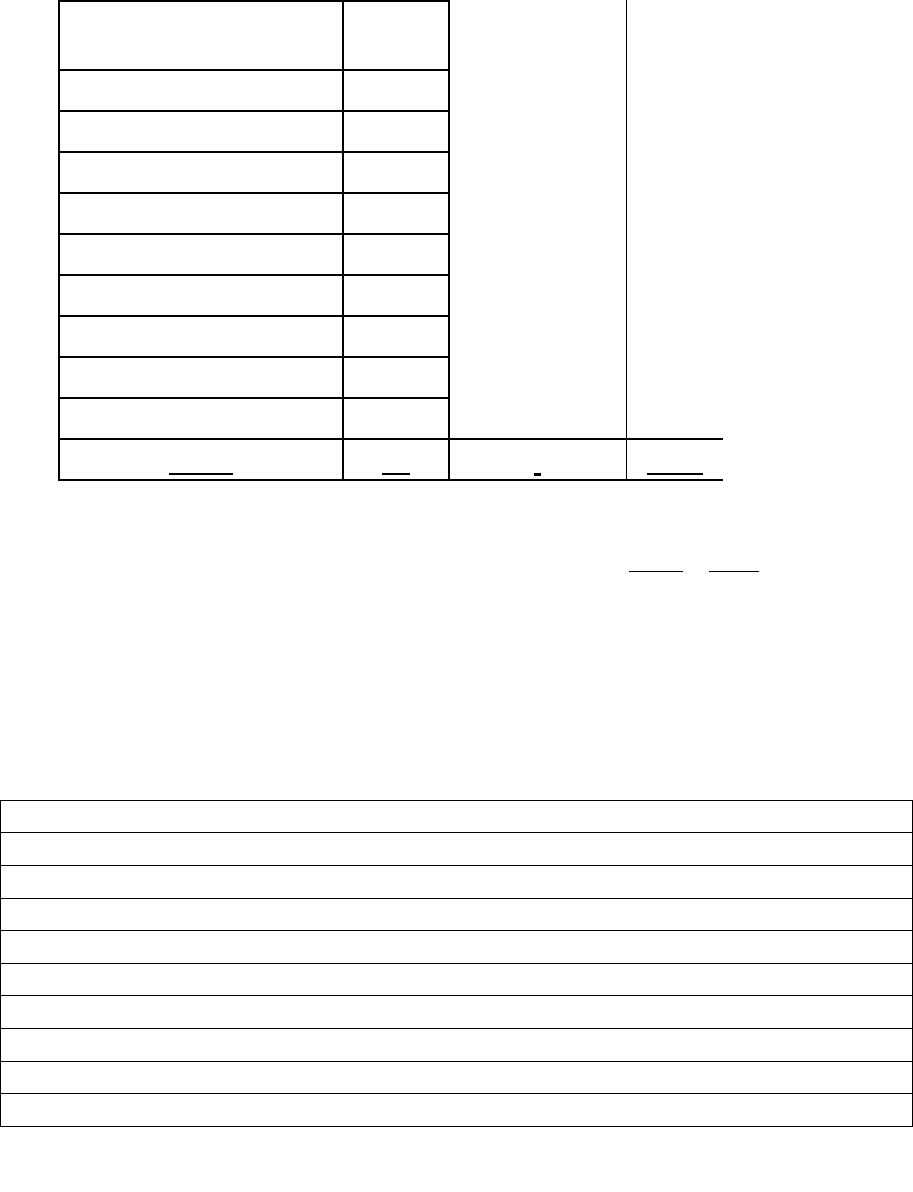

Table 3.11

Banking preference

frequency

percentage

In bank

200

20

ATM

250

25

Telephone

97

10

internet

450

45

Total

997

100

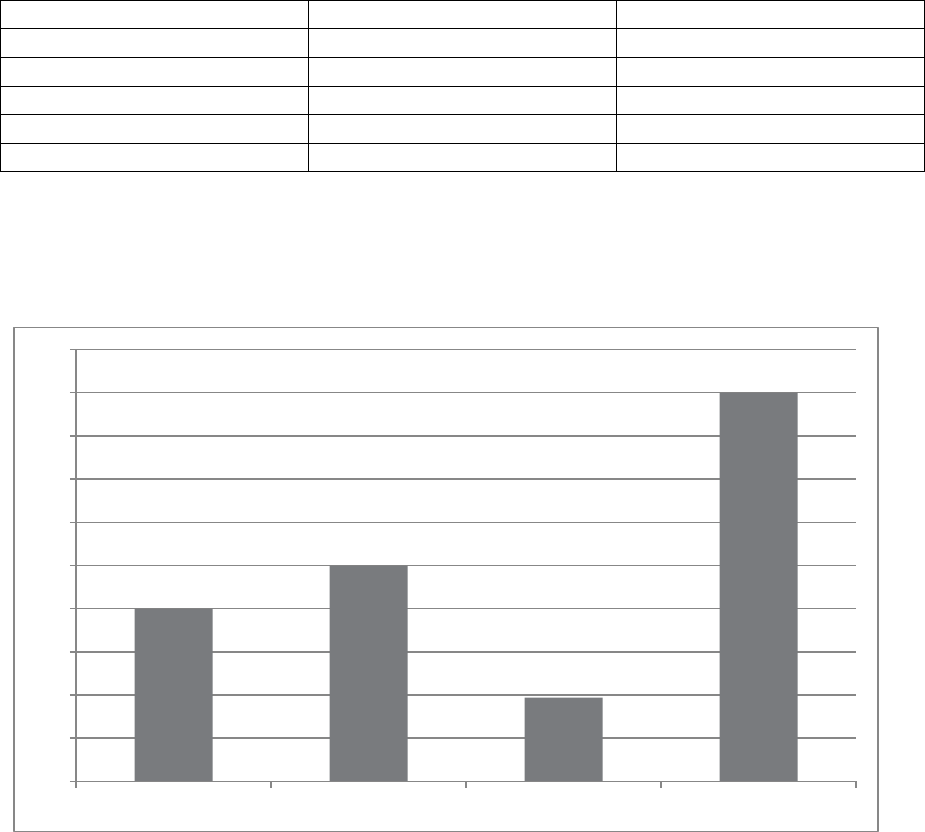

The above information could be illustrated using a bar chart

Figure 3.3

0

50

100

150

200

250

300

350

400

450

500

In bank ATM Telephone Internet

Page 89

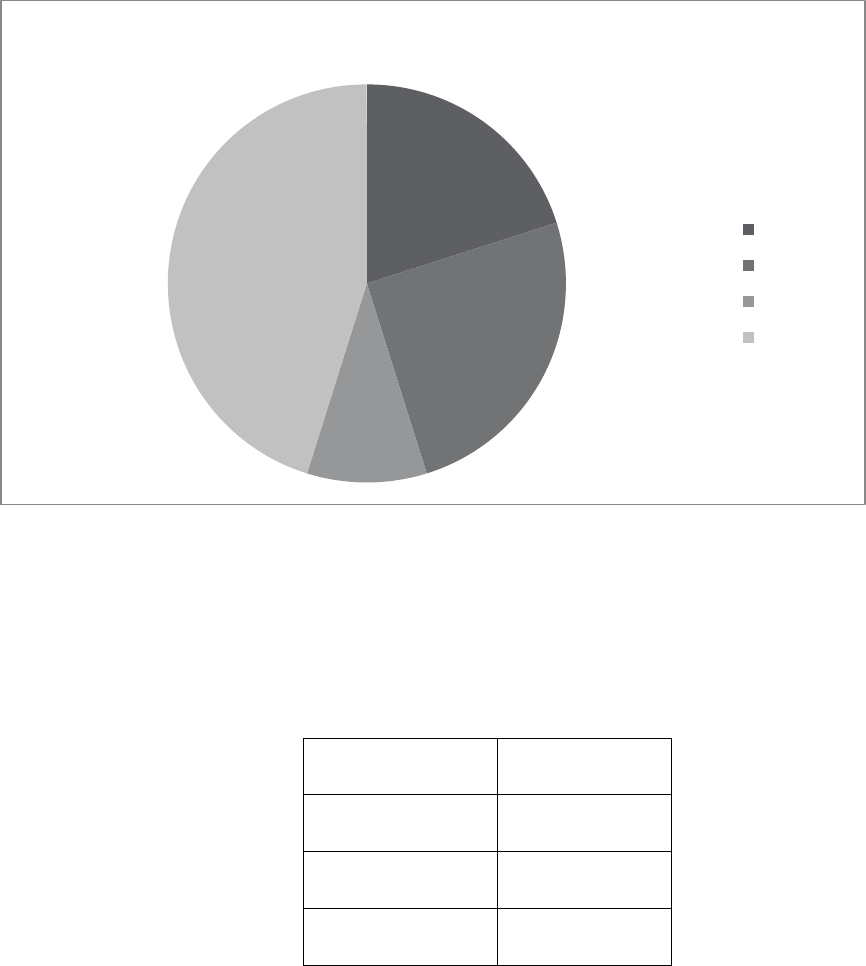

Or a pie chart

Figure 3.4

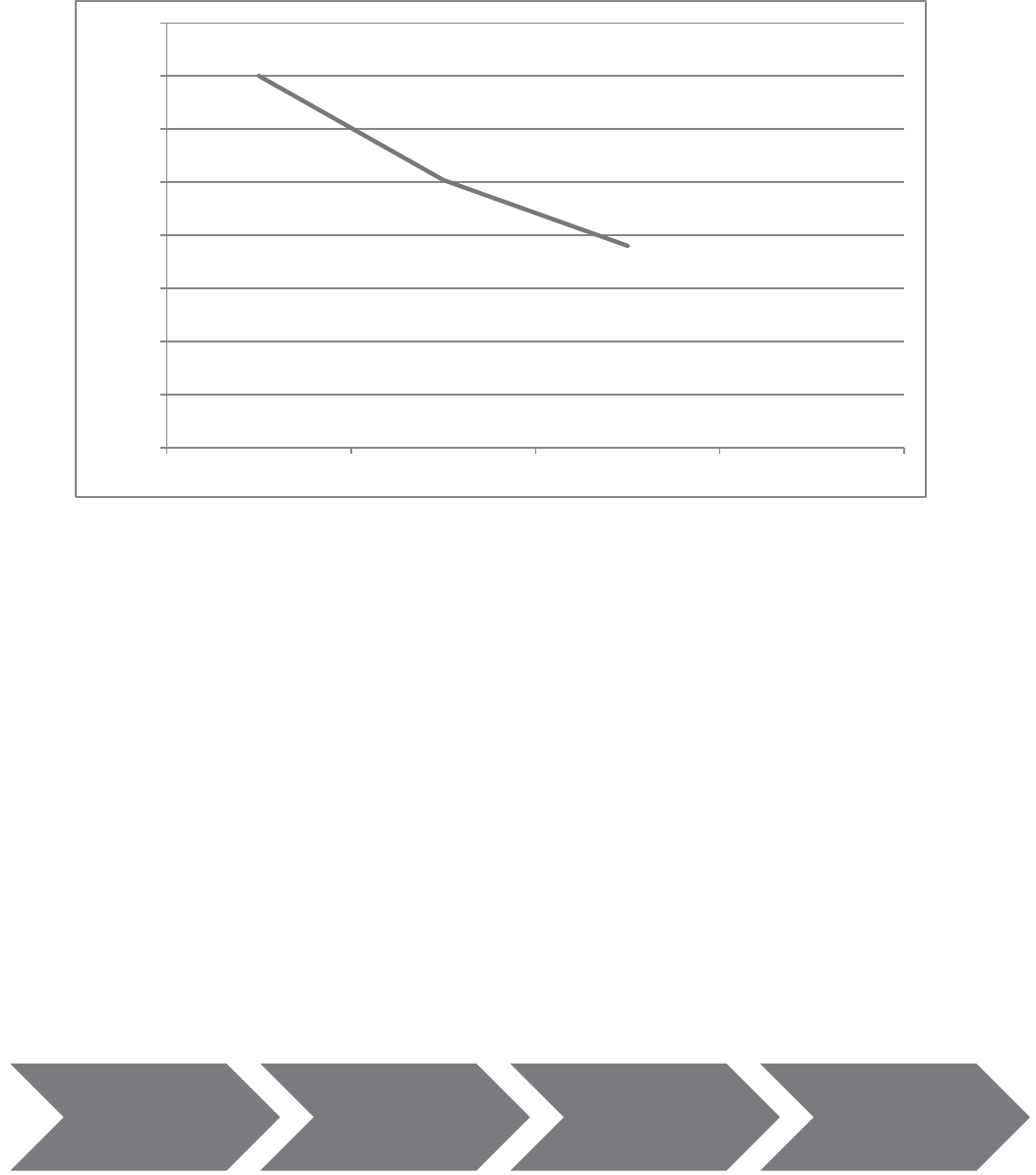

A simple line chart is usually used for time series data, where data is given over time.

The price of an average mobile homes over the past 3 years

Table 3.12

Year

Price RWF

2008

RWF350 000

2009

RWF252 000

2010

RWF190 000

Sales

In bank

ATM

Telephone

Internet

Page 90

Raw data Grouped

Illustrated

using histogram,

ogive.

Figure 3.5

The above graphs are used for categorical data.

Numerical Data

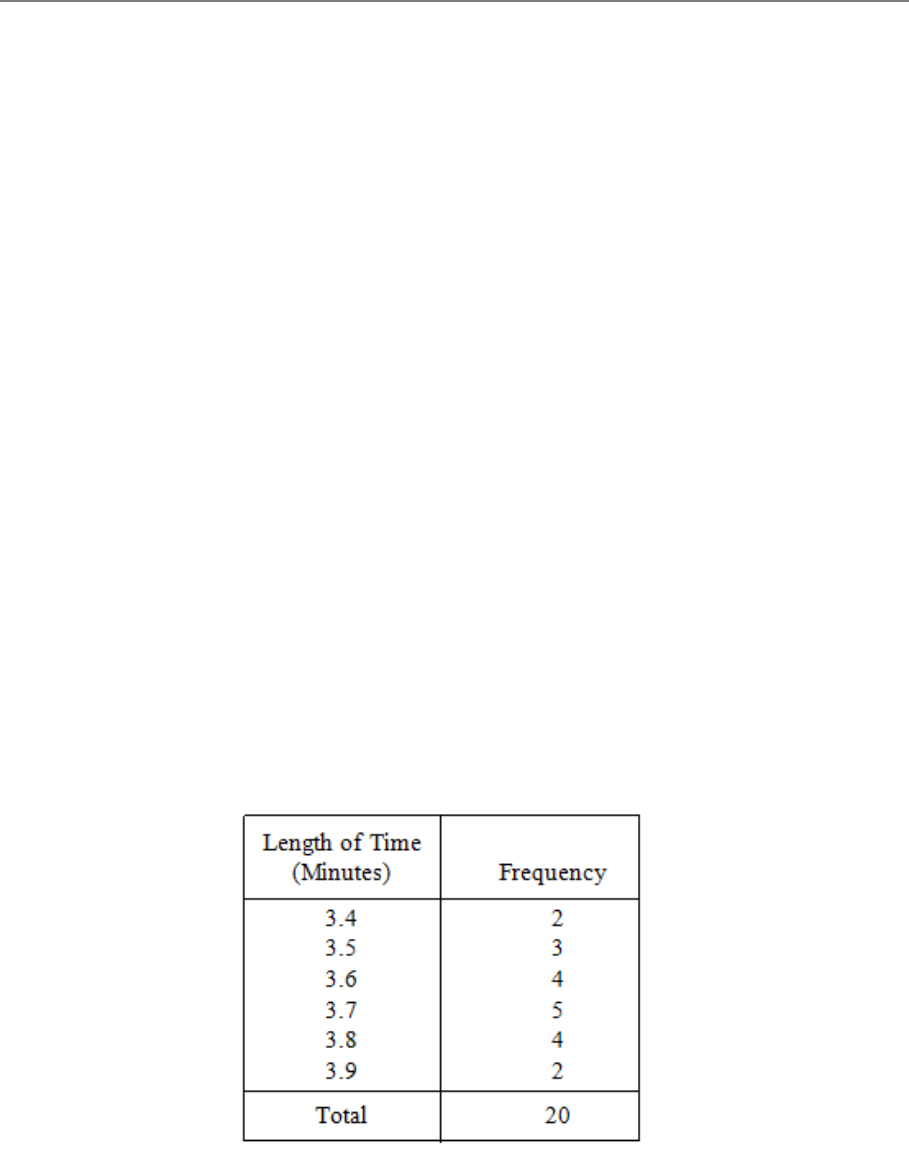

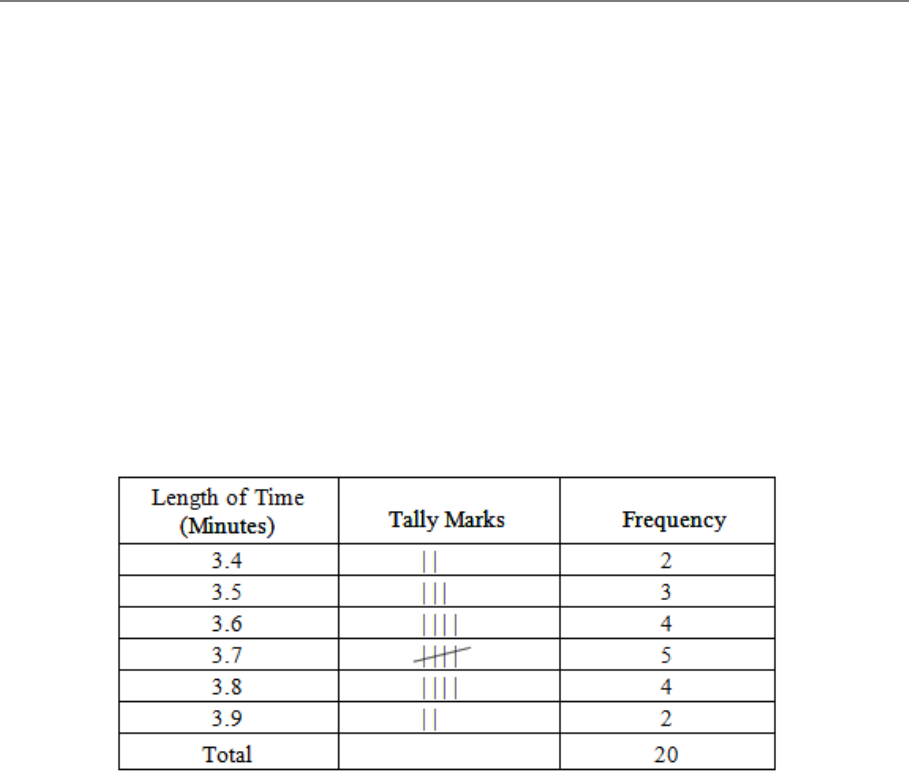

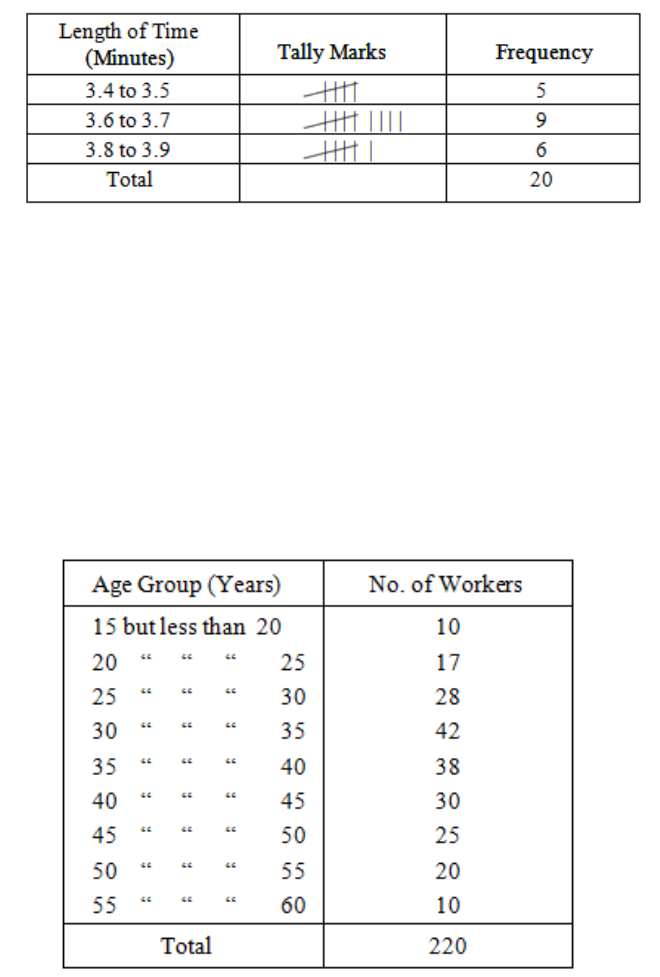

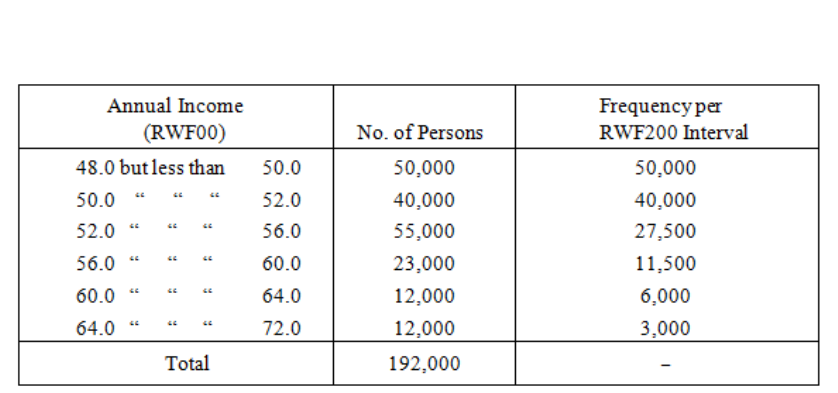

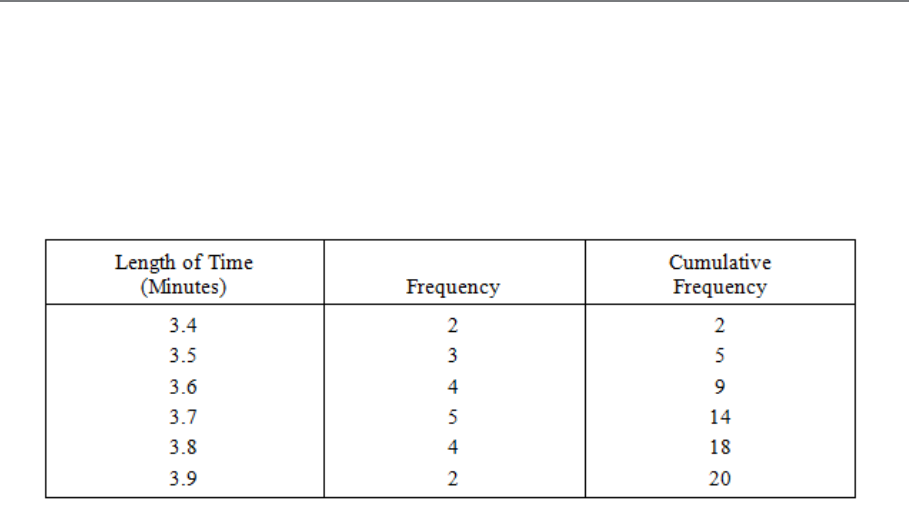

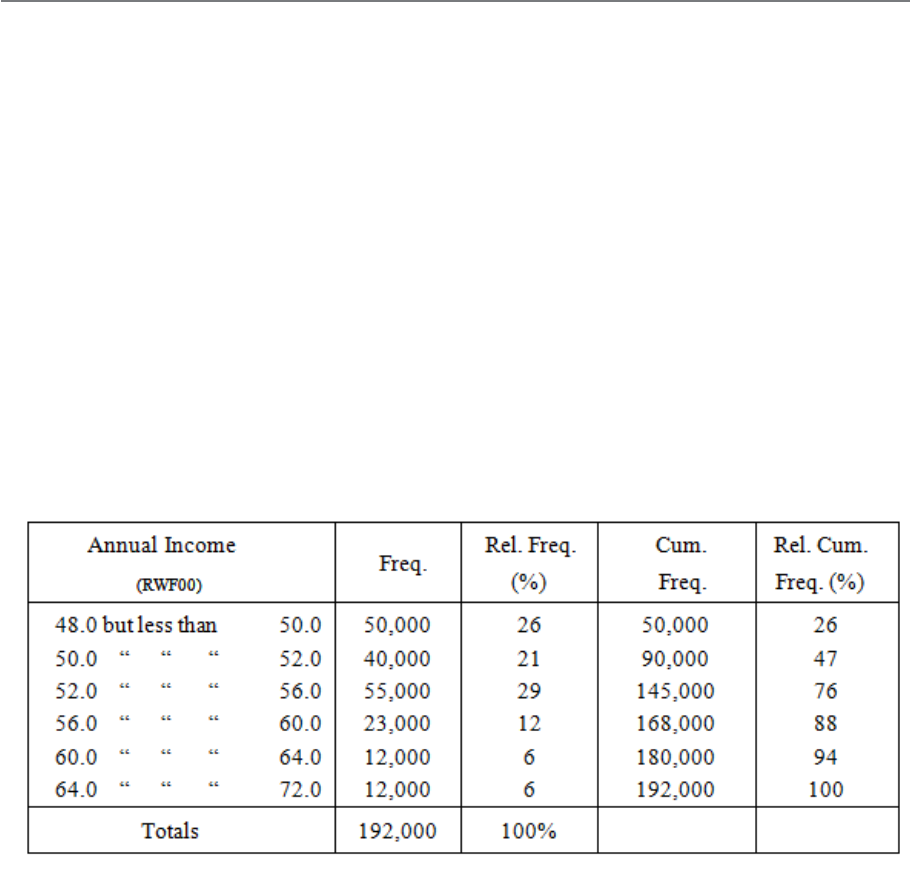

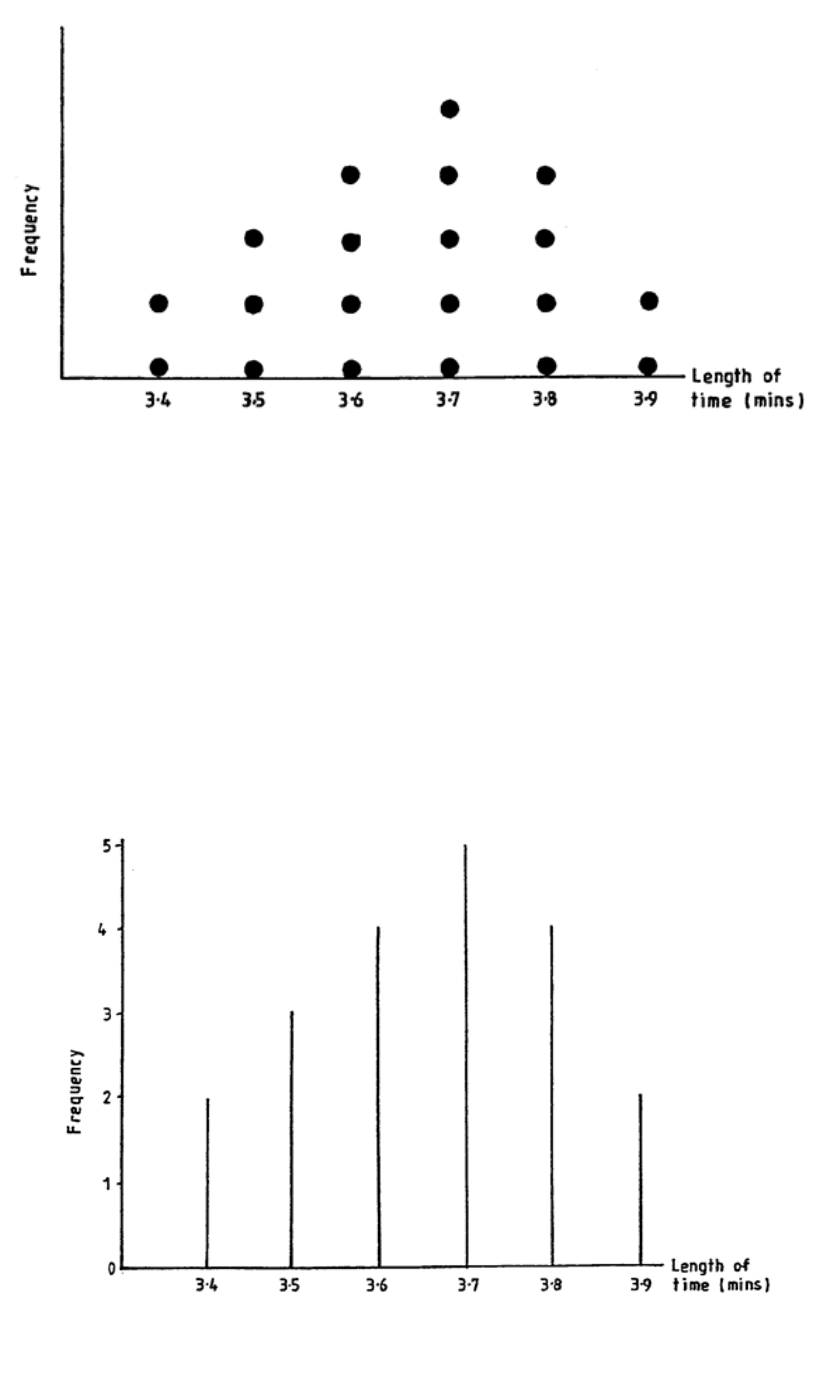

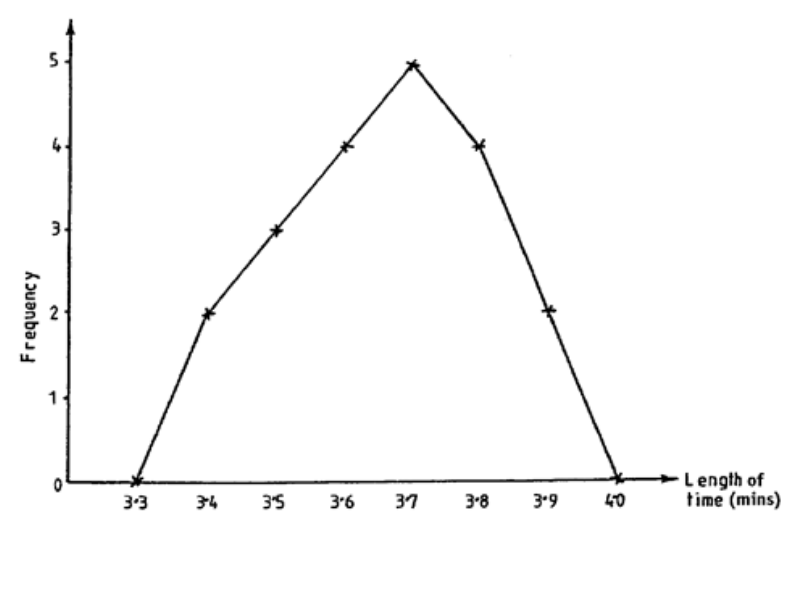

Numerical data is generally used more in statistics. The process in which numerical data is

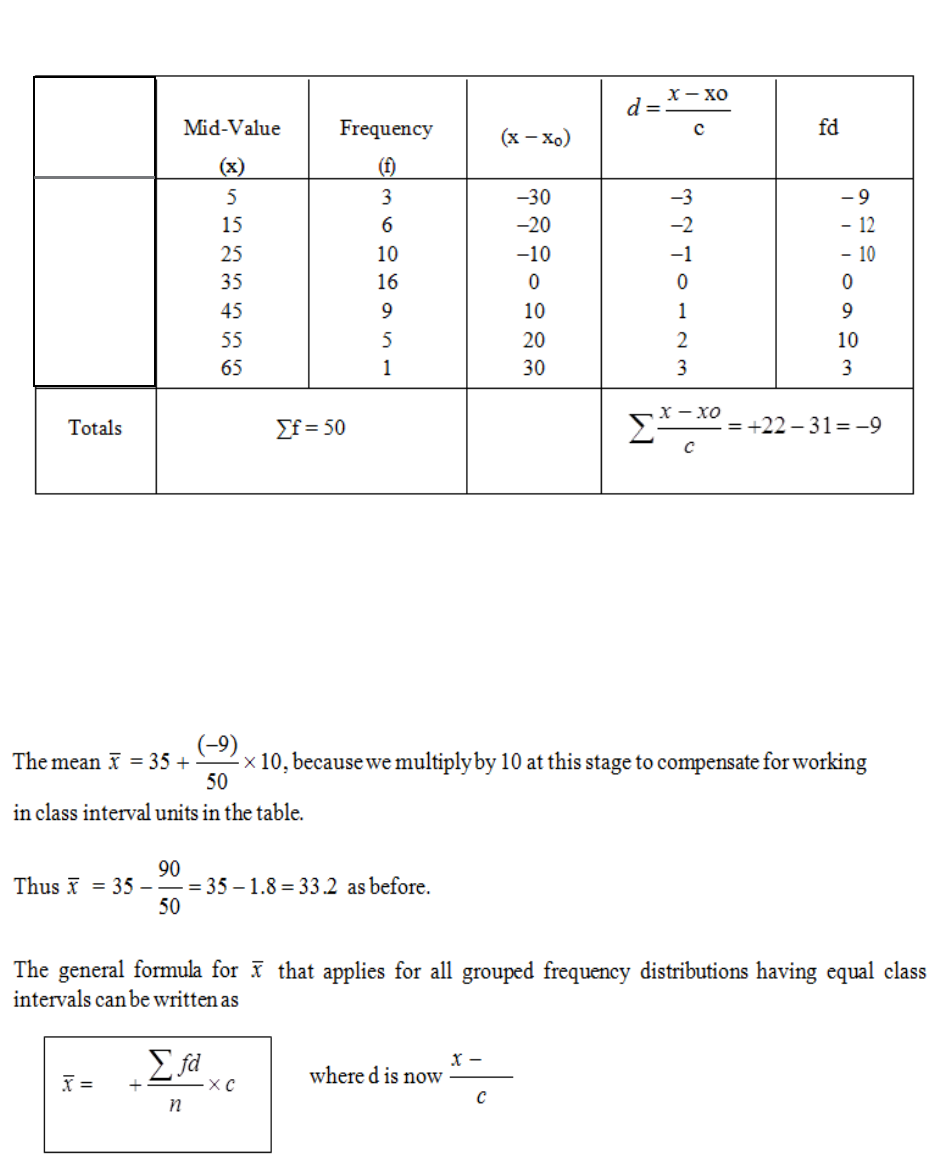

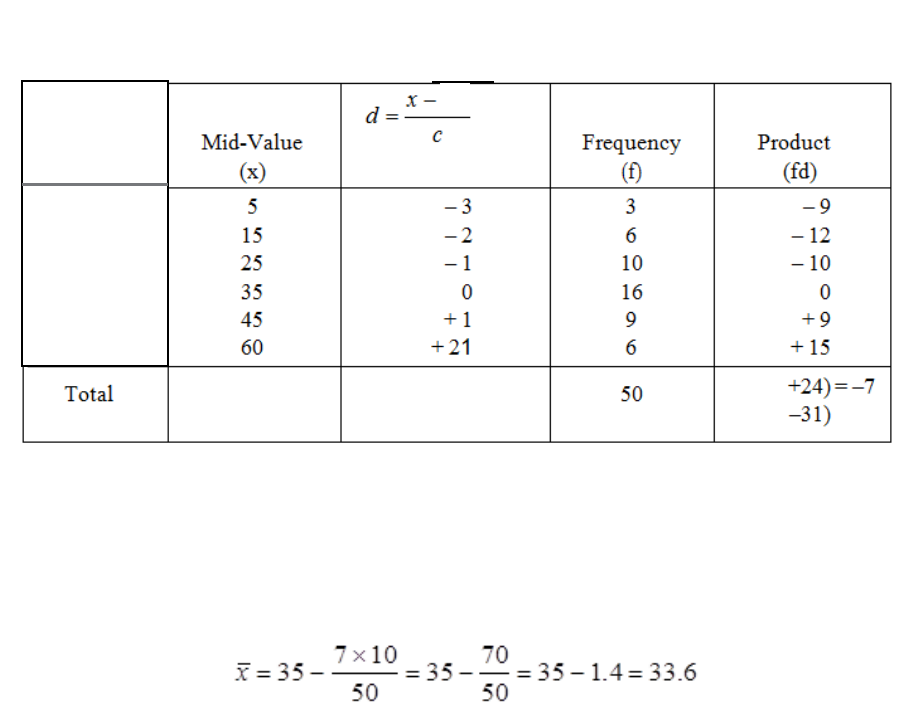

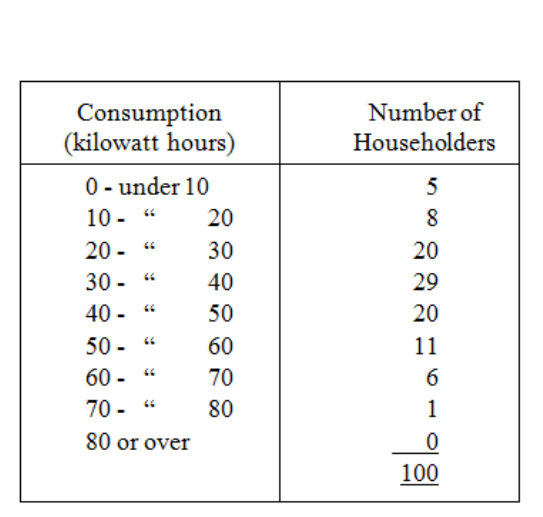

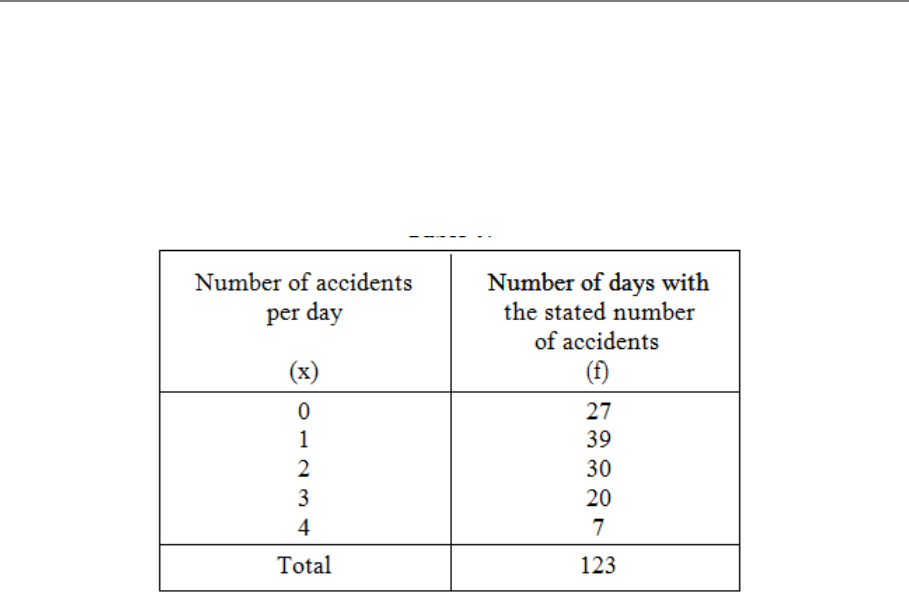

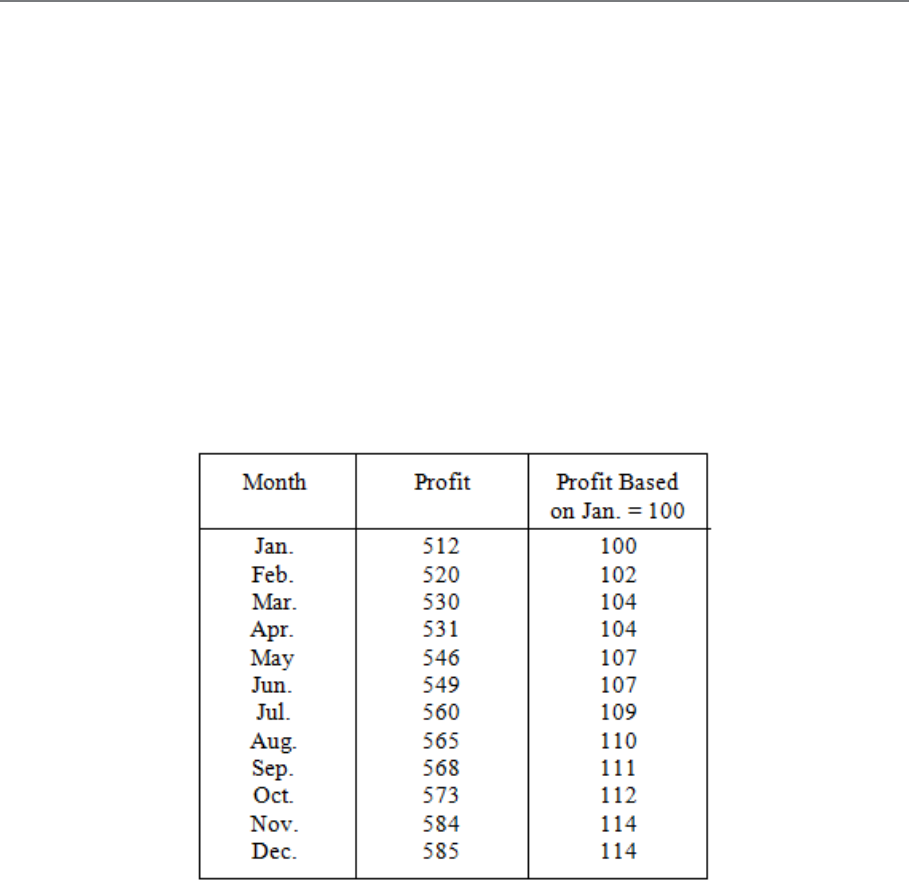

processed is as follows.