

ALL IN ONE

CISSP®

EXAM GUIDE

Sixth Edition

Shon Harris

New York • Chicago • San Francisco • Lisbon

London • Madrid • Mexico City • Milan • New Delhi

San Juan • Seoul • Singapore • Sydney • Toronto

McGraw-Hill is an independent entity from (ISC)2® and is not affiliated with (ISC)2 in any manner. This study/training guide and/or material is not sponsored by, endorsed by, or affiliated with (ISC)2 in any manner. This publication and digital content may be used in assisting students to prepare for the CISSP exam. Neither (ISC)2 nor McGraw-Hill warrants that use of this publication and digital content will ensure passing any exam. (ISC)2®, CISSP®, CAP®, ISSAP®, ISSEP®, ISSMP®, SSCP® and CBK are trademarks or registered trademarks of (ISC)2® in the United States and certain other countries. All other trademarks are trademarks of their respective owners.

Copyright © 2013 by McGraw-Hill Companies. All rights reserved. Except as permitted under the United States Copyright Act of 1976, no part of this publication may be reproduced or distributed in any form or by any means, or stored in a database or retrieval system, without the prior written permission of the publisher, with the exception that the program listings may be entered, stored, and executed in a computer system, but they may not be reproduced for publication.

ISBN: 978-0-07-178173-2

MHID: 0-07-178173-0

The material in this eBook also appears in the print version of this title: ISBN: 978-0-07-178174-9, MHID: 0-07-178174-9.

All trademarks are trademarks of their respective owners. Rather than put a trademark symbol after every occurrence of a trademarked name, we use names in an editorial fashion only, and to the benefit of the trademark owner, with no intention of infringement of the trademark. Where such designations appear in this book, they have been printed with initial caps.

McGraw-Hill eBooks are available at special quantity discounts to use as premiums and sales promotions, or for use in corporate training programs. To contact a representative please e-mail us at bulksales@mcgraw-hill.com.

Information has been obtained by McGraw-Hill from sources believed to be reliable. However, because of the possibility of human or mechanical error by our sources, McGraw-Hill, or others, McGraw-Hill does not guarantee the accuracy, adequacy, or completeness of any information and is not responsible for any errors or omissions or the results obtained from the use of such information.

TERMS OF USE

This is a copyrighted work and The McGraw-Hill Companies, Inc. (“McGraw-Hill”) and its licensors reserve all rights in and to the work. Use of this work is subject to these terms. Except as permitted under the Copyright Act of 1976 and the right to store and retrieve one copy of the work, you may not decompile, disassemble, reverse engineer, reproduce, modify, create derivative works based upon, transmit, distribute, disseminate, sell, publish or sublicense the work or any part of it without McGraw-Hill’s prior consent. You may use the work for your own noncommercial and personal use; any other use of the work is strictly prohibited. Your right to use the work may be terminated if you fail to comply with these terms.

THE WORK IS PROVIDED “AS IS.” McGRAW-HILL AND ITS LICENSORS MAKE NO GUARANTEES OR WARRANTIES AS TO THE ACCURACY, ADEQUACY OR COMPLETENESS OF OR RESULTS TO BE OBTAINED FROM USING THE WORK, INCLUDING ANY INFORMATION THAT CAN BE ACCESSED THROUGH THE WORK VIA HYPERLINK OR OTHERWISE, AND EXPRESSLY DISCLAIM ANY WARRANTY, EXPRESS OR IMPLIED, INCLUDING BUT NOT LIMITED TO IMPLIED WARRANTIES OF MERCHANTABILITY OR FITNESS FOR A PARTICULAR PURPOSE.

McGraw-Hill and its licensors do not warrant or guarantee that the functions contained in the work will meet your requirements or that its operation will be uninterrupted or error free. Neither McGraw-Hill nor its licensors shall be liable to you or anyone else for any inaccuracy, error or omission, regardless of cause, in the work or for any damages resulting therefrom. McGraw-Hill has no responsibility for the content of any information accessed through the work. Under no circumstances shall McGraw-Hill and/or its licensors be liable for any indirect, incidental, special, punitive, consequential or similar damages that result from the use of or inability to use the work, even if any of them has been advised of the possibility of such damages. This limitation of liability shall apply to any claim or cause whatsoever whether such claim or cause arises in contract, tort or otherwise.

I dedicate this book to some of the most wonderful people

I have lost over the last several years.

My grandfather (George Fairbairn), who taught me about

integrity, unconditional love, and humility.

My grandmother (Marge Fairbairn), who taught me about the importance

of living life to the fullest, having “fun fun,” and, of course, blackjack.

My dad (Tom Conlon), who taught me how to be strong and face adversity.

My father-in-law (Maynard Harris), who taught me

a deep meaning of the importance of family that I never knew before.

Each person was a true role model to me. I learned a lot from them,

I appreciate all that they have done for me, and I miss them terribly.

ABOUT THE AUTHOR

Shon Harris is the founder and CEO of Shon Harris Security LLC and Logical Security LLC, a security consultant, a former engineer in the Air Force’s Information Warfare unit, an instructor, and an author. Shon has owned and run her own training and consulting companies since 2001. She consults with Fortune 100 corporations and government agencies on extensive security issues. She has authored three best-selling CISSP books, was a contributing author to Gray Hat Hacking: The Ethical Hacker’s Handbook and Security Information and Event Management (SIEM) Implementation, and was a technical editor for Information Security Magazine. Shon has also developed many digital security products for Pearson Publishing.

About the Technical Editor

Polisetty Veera Subrahmanya Kumar, CISSP, CISA, PMP, PMI-RMP, MCPM, ITIL, has more than two decades of experience in the field of Information Technology. His areas of specialization include information security, business continuity, project management, and risk management. In the recent past he served his term as Chairperson for Project Management Institute’s PMI-RMP (PMI Risk Management Professional) Credentialing Committee and was a member of ISACA’s India Growth Task Force team. In the past he worked as content development team leader on a variety of PMI standards development projects. He was a lead instructor for the PMI PMBOK review seminars.

CONTENTS AT A GLANCE

Chapter 1 Becoming a CISSP . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1

Chapter 2 Information Security Governance and Risk Management . . . . . . . . 21

Chapter 3 Access Control . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 157

Chapter 4 Security Architecture and Design . . . . . . . . . . . . . . . . . . . . . . . . . . 297

Chapter 5 Physical and Environmental Security . . . . . . . . . . . . . . . . . . . . . . . . 427

Chapter 6 Telecommunications and Network Security . . . . . . . . . . . . . . . . . . 515

Chapter 7 Cryptography . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 759

Chapter 8 Business Continuity and Disaster Recovery . . . . . . . . . . . . . . . . . . 885

Chapter 9 Legal, Regulations, Compliance, and Investigations . . . . . . . . . . . . . 979

Chapter 10 Software Development Security . . . . . . . . . . . . . . . . . . . . . . . . . . . 1081

Chapter 11 Security Operations . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1233

Appendix A Comprehensive Questions . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1319

Appendix B About the Download . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1379

Index . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1385


Glossary . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . G-1


CONTENTS

Foreword . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . xx

Acknowledgments . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . xxiii

Chapter 1 Becoming a CISSP . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1

Why Become a CISSP? . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1

The CISSP Exam . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 2

CISSP: A Brief History . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 6

How Do You Sign Up for the Exam? . . . . . . . . . . . . . . . . . . . . . . . . 7

What Does This Book Cover? . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 7

Tips for Taking the CISSP Exam . . . . . . . . . . . . . . . . . . . . . . . . . . . . 8

How to Use This Book . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 9

Questions . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 10

Answers . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 19

Chapter 2 Information Security Governance and Risk Management . . . . . . . . 21

Fundamental Principles of Security . . . . . . . . . . . . . . . . . . . . . . . . . 22

Availability . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 23

Integrity . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 23

Confidentiality . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 24

Balanced Security . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 24

Security Definitions . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 26

Control Types . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 28

Security Frameworks . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 34

ISO/IEC 27000 Series . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 36

Enterprise Architecture Development . . . . . . . . . . . . . . . . . . . 41

Security Controls Development . . . . . . . . . . . . . . . . . . . . . . . 55

COSO . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 59

Process Management Development . . . . . . . . . . . . . . . . . . . . 60

Functionality vs. Security . . . . . . . . . . . . . . . . . . . . . . . . . . . . 68

Security Management . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 69

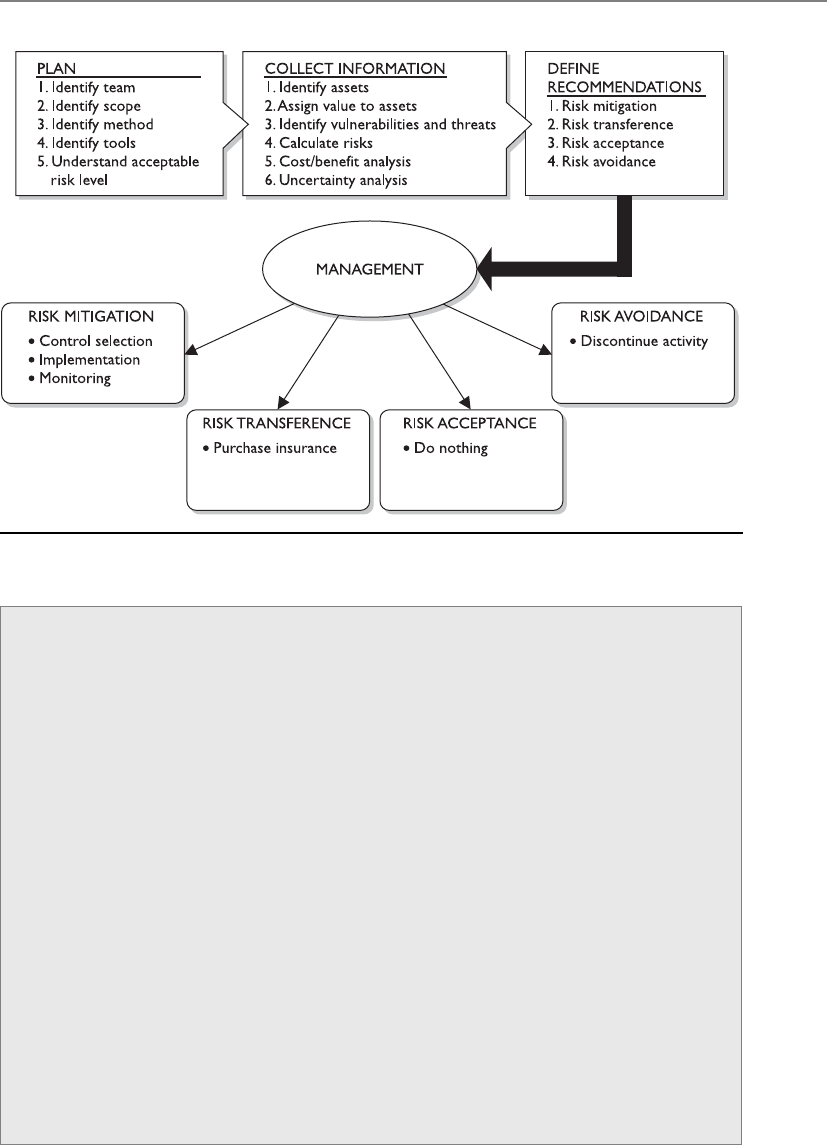

Risk Management . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 70

Who Really Understands Risk Management? . . . . . . . . . . . . . 71

Information Risk Management Policy . . . . . . . . . . . . . . . . . . 72

The Risk Management Team . . . . . . . . . . . . . . . . . . . . . . . . . . 73

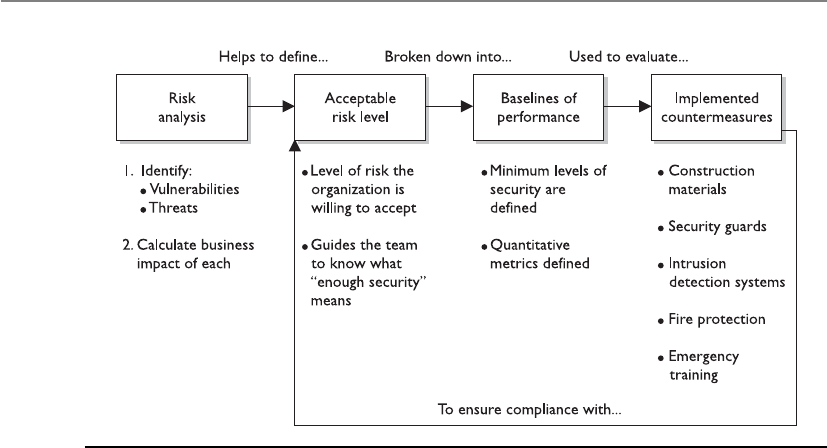

Risk Assessment and Analysis . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 74

Risk Analysis Team . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 75

The Value of Information and Assets . . . . . . . . . . . . . . . . . . . 76

Costs That Make Up the Value . . . . . . . . . . . . . . . . . . . . . . . . 76

Identifying Vulnerabilities and Threats . . . . . . . . . . . . . . . . . 77

Methodologies for Risk Assessment . . . . . . . . . . . . . . . . . . . . 78

Risk Analysis Approaches . . . . . . . . . . . . . . . . . . . . . . . . . . . . 85


CISSP All-in-One Exam Guide

viii

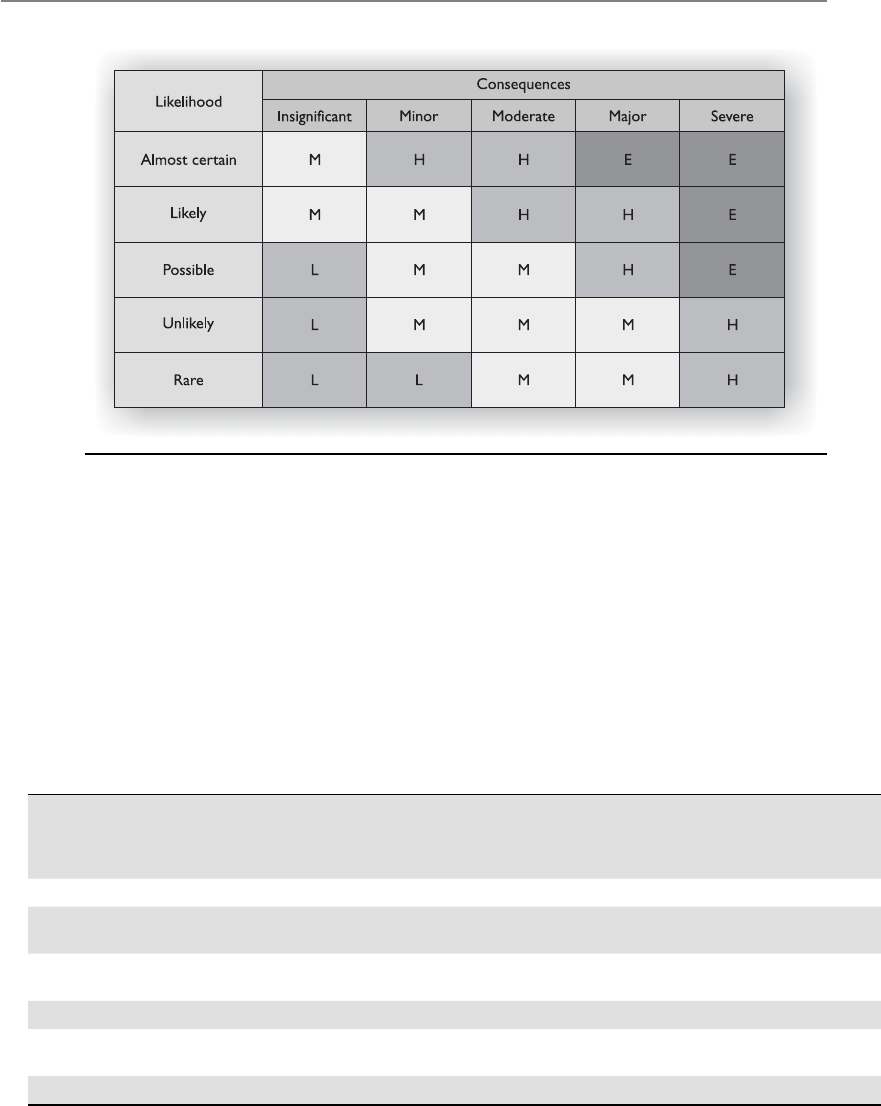

Qualitative Risk Analysis . . . . . . . . . . . . . . . . . . . . . . . . . . . . 89

Protection Mechanisms . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 92

Putting It Together . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 96

Total Risk vs. Residual Risk . . . . . . . . . . . . . . . . . . . . . . . . . . . 96

Handling Risk . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 97

Outsourcing . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 100

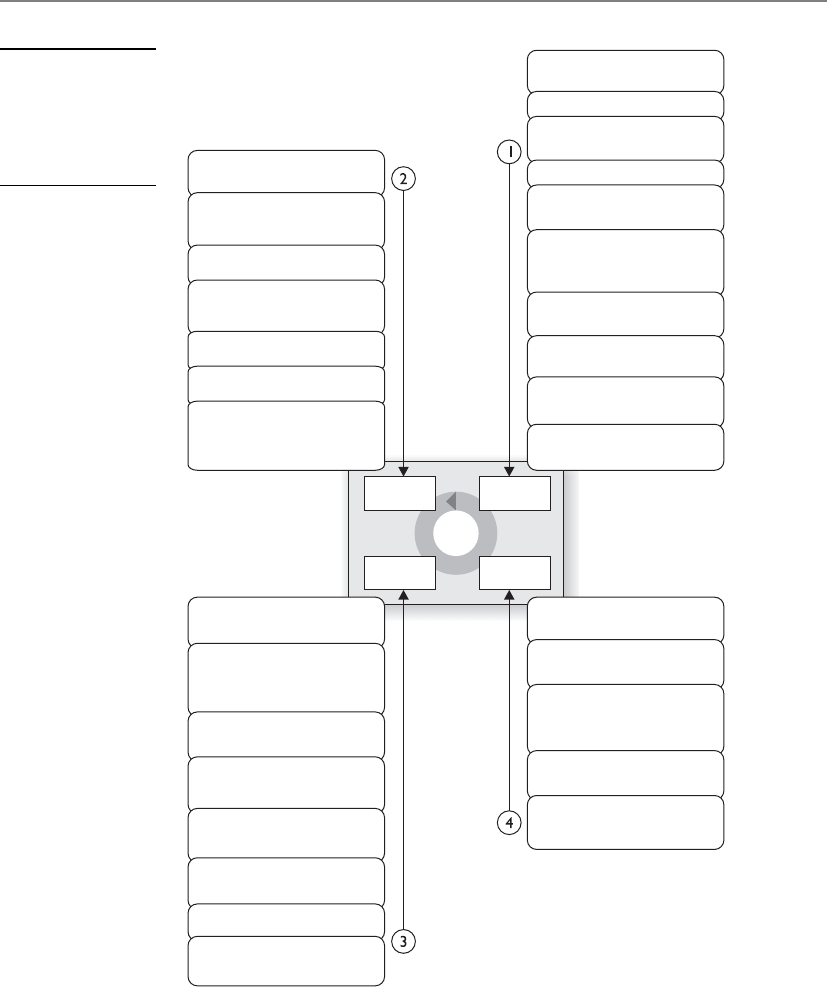

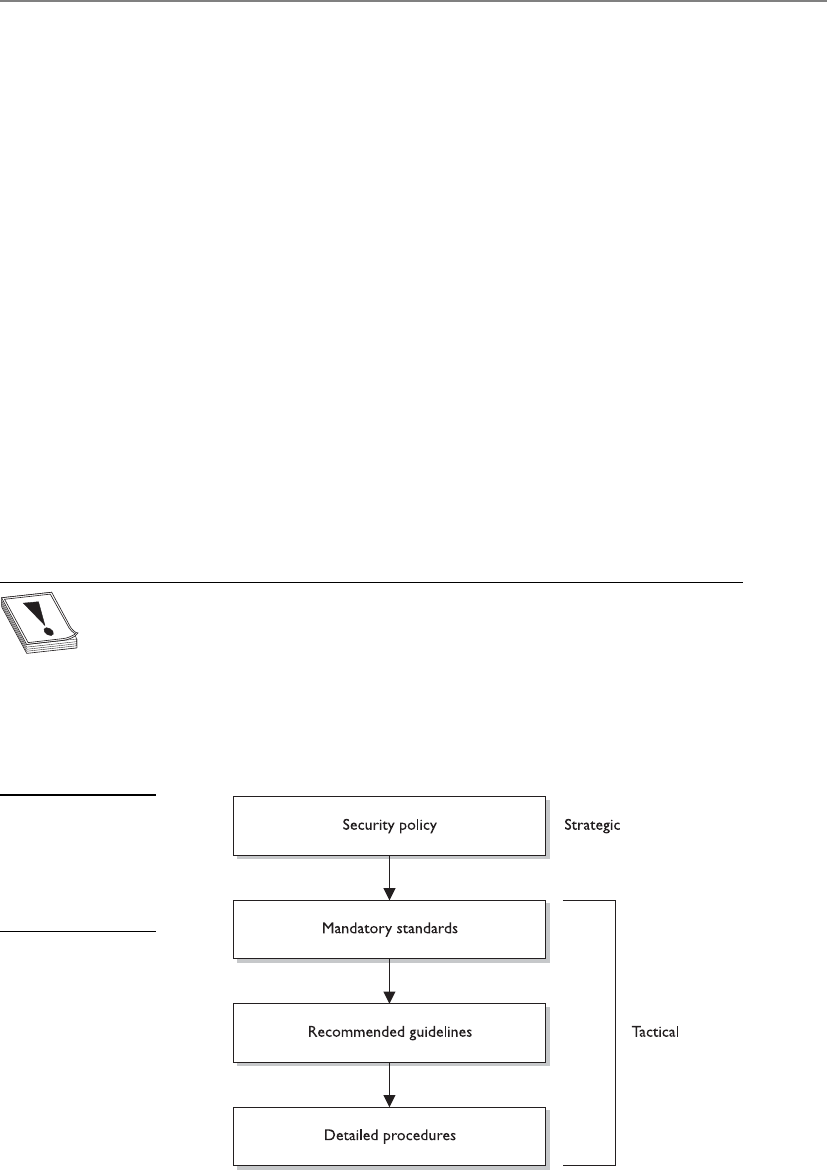

Policies, Standards, Baselines, Guidelines, and Procedures . . . . . . . . . . . . . . . . . . . . . . . 101

Security Policy . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 102

Standards . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 105

Baselines . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 106

Guidelines . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 106

Procedures . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 107

Implementation . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 108

Information Classification . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 109

Classification Levels . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 110

Classification Controls . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 113

Layers of Responsibility . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 114

Board of Directors . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 115

Executive Management . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 116

Chief Information Officer . . . . . . . . . . . . . . . . . . . . . . . . . . . . 118

Chief Privacy Officer . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 118

Chief Security Officer . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 119

Security Steering Committee . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 120

Audit Committee . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 121

Data Owner . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 121

Data Custodian . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 122

System Owner . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 122

Security Administrator . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 122

Security Analyst . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 123

Application Owner . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 123

Supervisor . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 123

Change Control Analyst . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 124

Data Analyst . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 124

Process Owner . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 124

Solution Provider . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 124

User . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 125

Product Line Manager . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 125

Auditor . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 125

Why So Many Roles? . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 126

Personnel Security . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 126

Hiring Practices . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 128

Termination . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 129

Security-Awareness Training . . . . . . . . . . . . . . . . . . . . . . . . . . 130

Degree or Certification? . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 131

Security Governance . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 132

Metrics . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 132

Contents


Summary . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 137

Quick Tips . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 138

Questions . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 141

Answers . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 150

Chapter 3 Access Control . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 157

Access Controls Overview . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 157

Security Principles . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 158

Availability . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 159

Integrity . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 159

Confidentiality . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 160

Identification, Authentication, Authorization, and Accountability . . . . . . . . . . . . . . . . . . . . 160

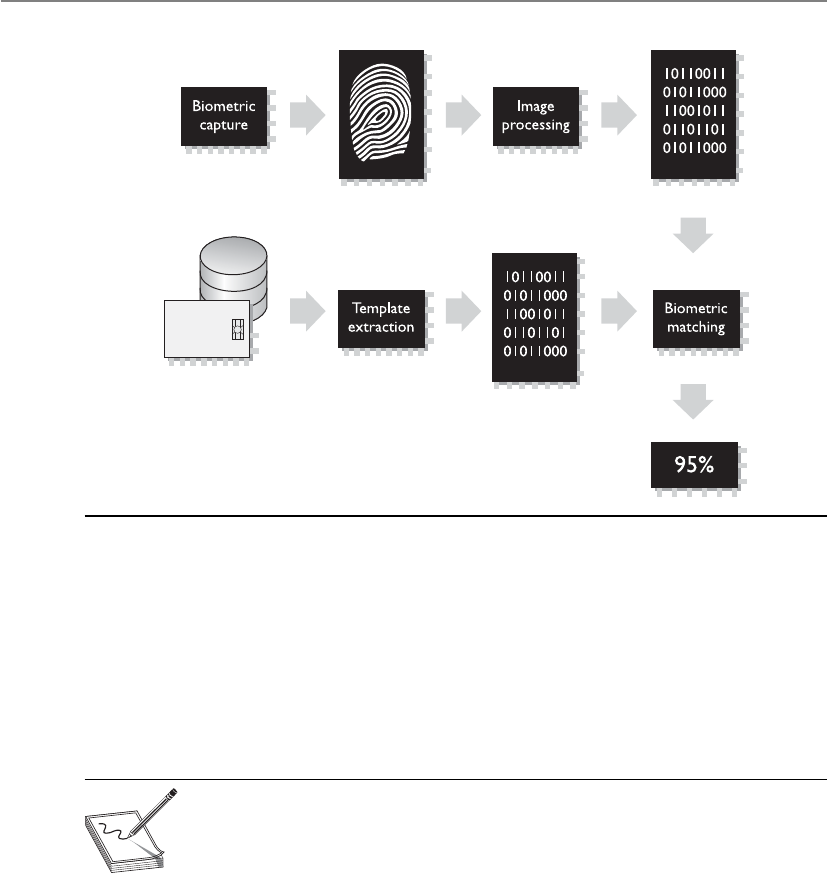

Identification and Authentication . . . . . . . . . . . . . . . . . . . . . 162

Password Management . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 174

Authorization . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 203

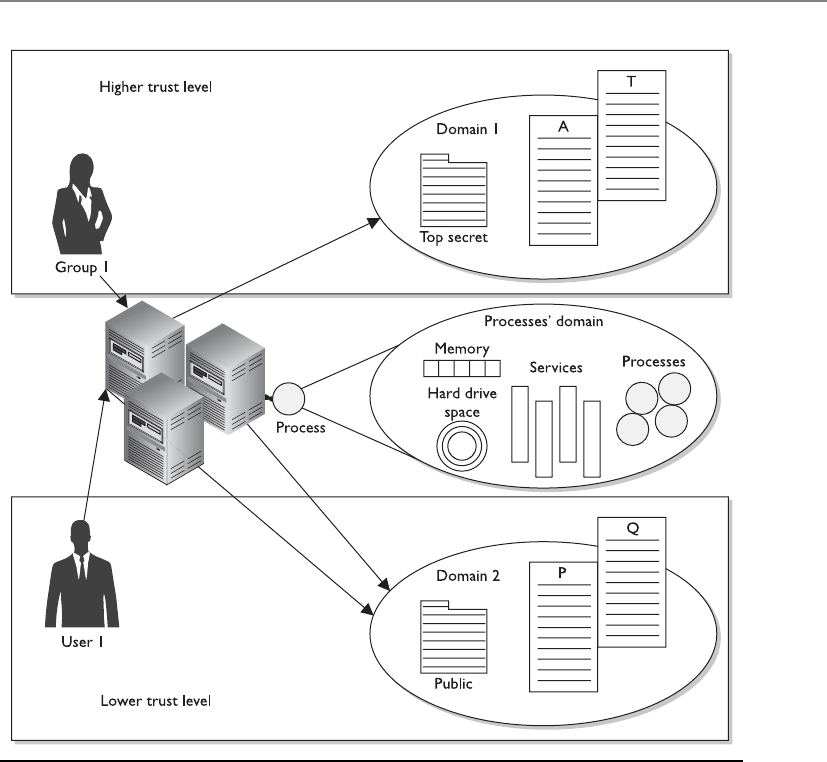

Access Control Models . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 219

Discretionary Access Control . . . . . . . . . . . . . . . . . . . . . . . . . 220

Mandatory Access Control . . . . . . . . . . . . . . . . . . . . . . . . . . . 221

Role-Based Access Control . . . . . . . . . . . . . . . . . . . . . . . . . . . 224

Access Control Techniques and Technologies . . . . . . . . . . . . . . . . . 227

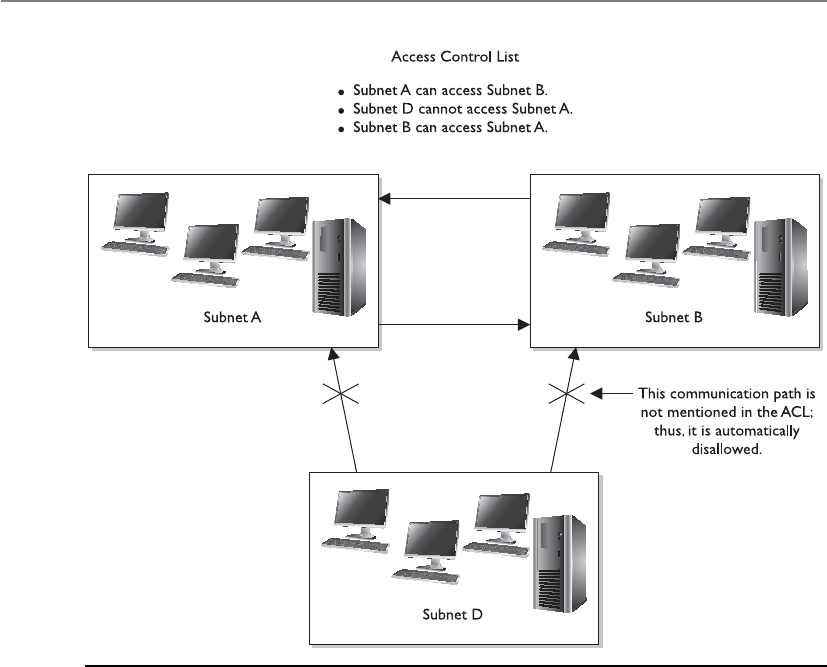

Rule-Based Access Control . . . . . . . . . . . . . . . . . . . . . . . . . . . 227

Constrained User Interfaces . . . . . . . . . . . . . . . . . . . . . . . . . . 228

Access Control Matrix . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 229

Content-Dependent Access Control . . . . . . . . . . . . . . . . . . . . 231

Context-Dependent Access Control . . . . . . . . . . . . . . . . . . . . 231

Access Control Administration . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 232

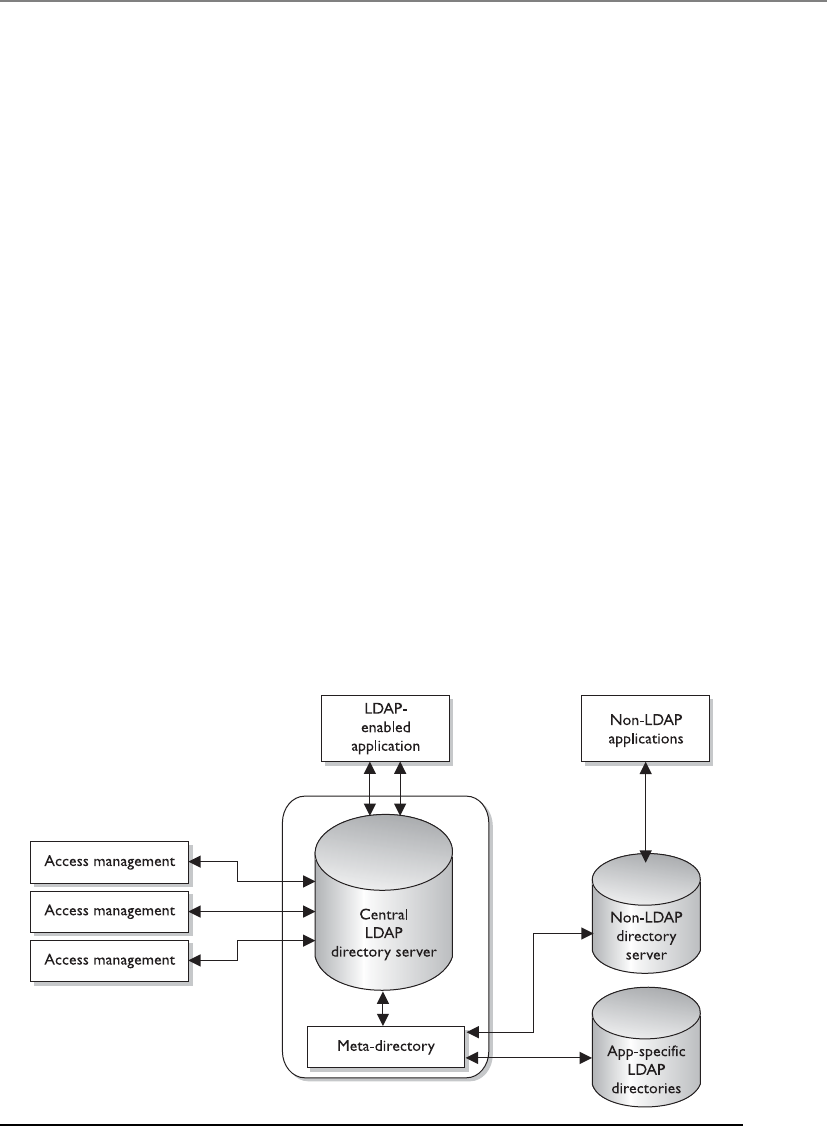

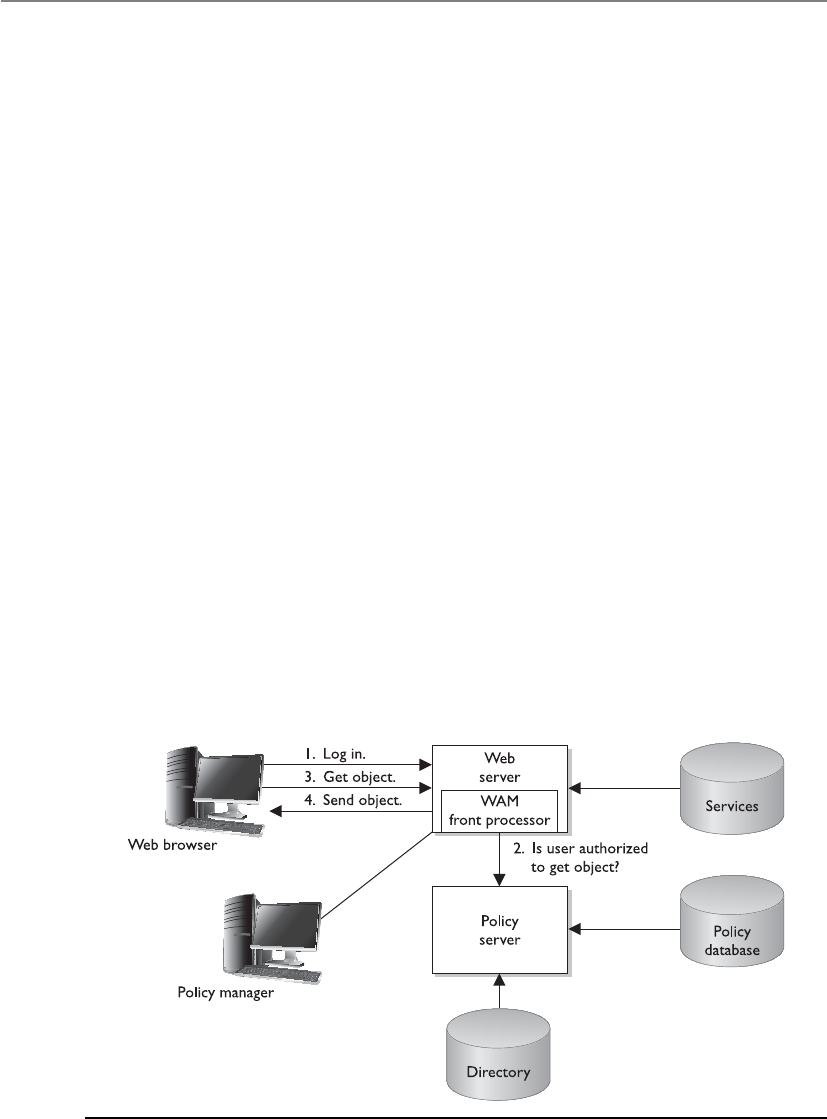

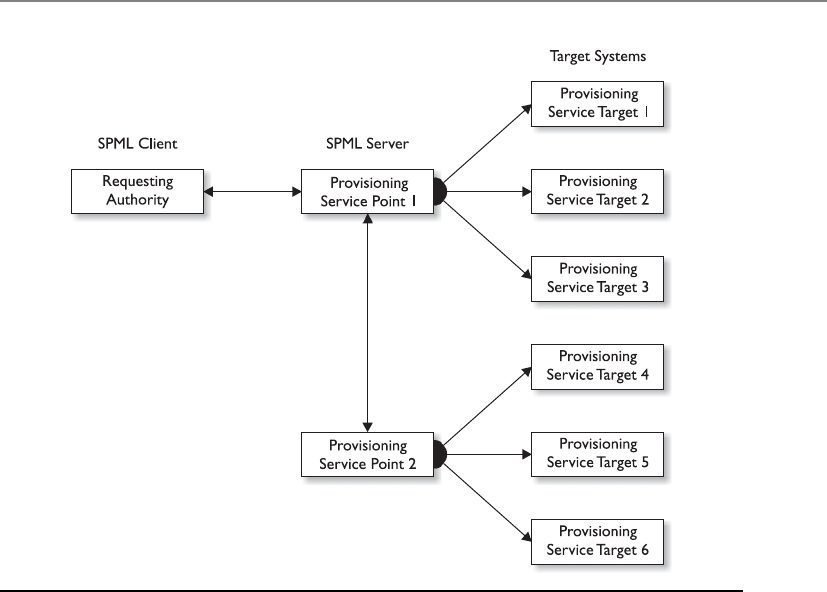

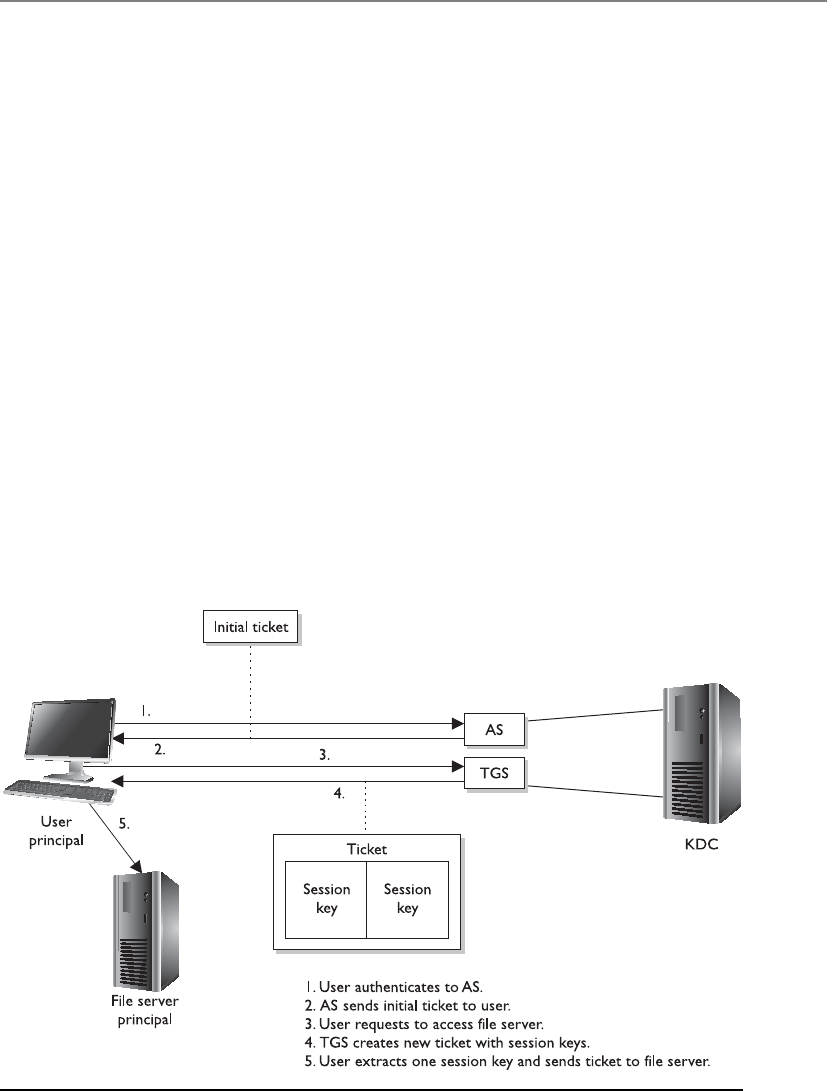

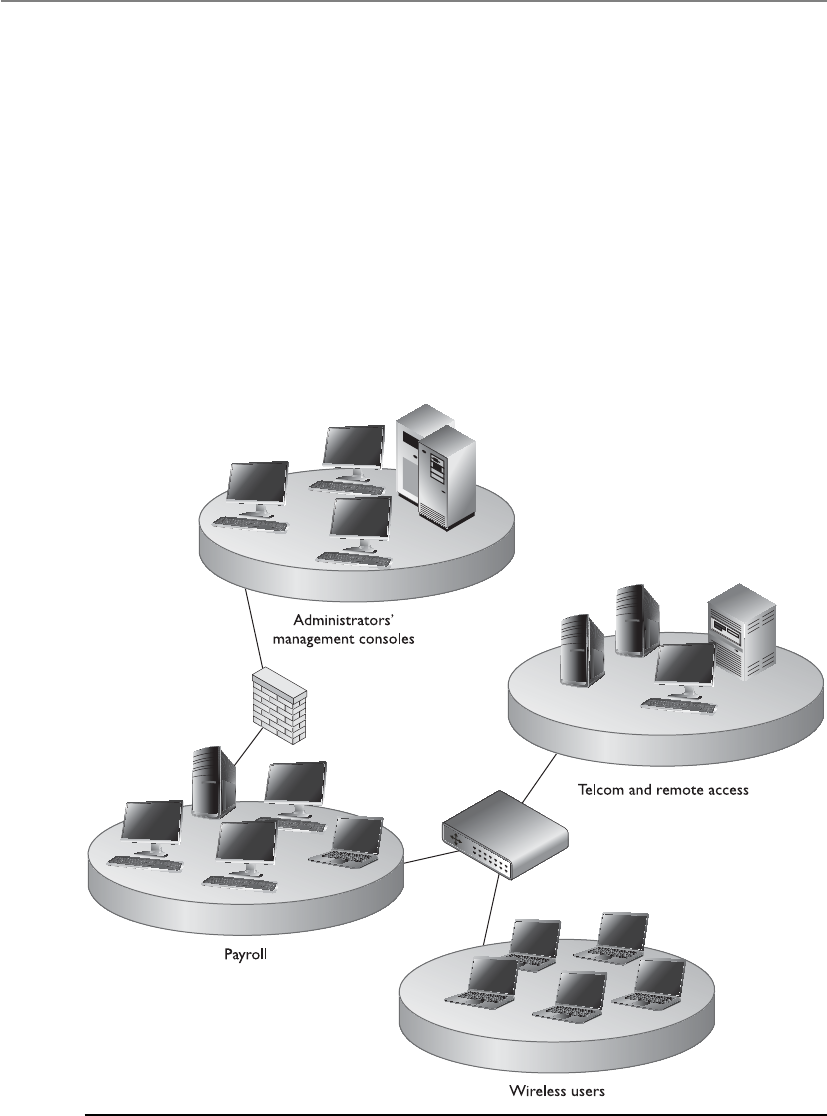

Centralized Access Control Administration . . . . . . . . . . . . . . 233

Decentralized Access Control Administration . . . . . . . . . . . . 240

Access Control Methods . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 241

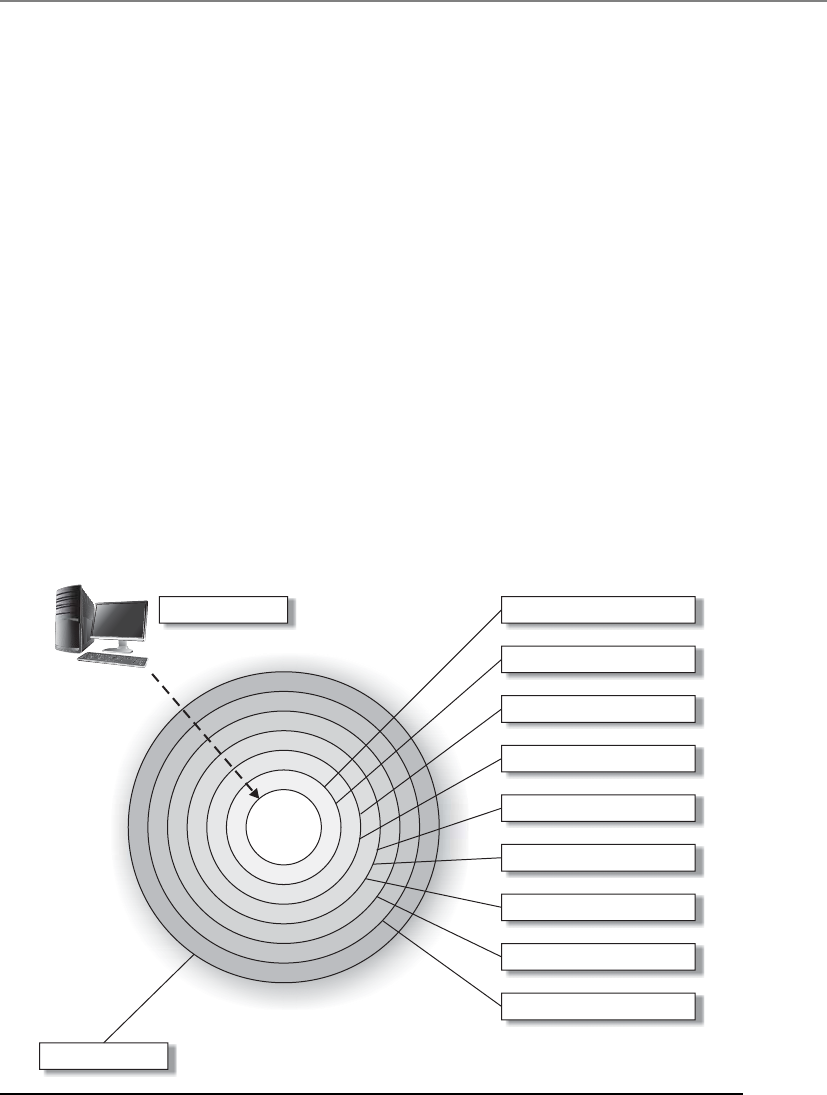

Access Control Layers . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 241

Administrative Controls . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 242

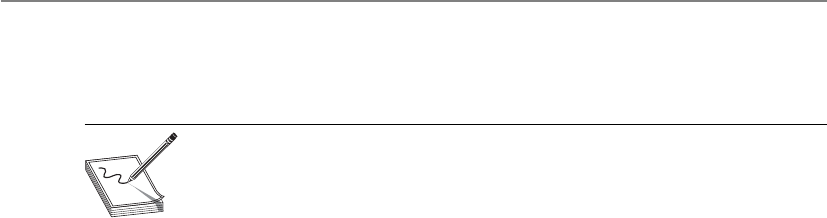

Physical Controls . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 243

Technical Controls . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 245

Accountability . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 248

Review of Audit Information . . . . . . . . . . . . . . . . . . . . . . . . . 250

Protecting Audit Data and Log Information . . . . . . . . . . . . . . 251

Keystroke Monitoring . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 251

Access Control Practices . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 252

Unauthorized Disclosure of Information . . . . . . . . . . . . . . . . 253

Access Control Monitoring . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 255

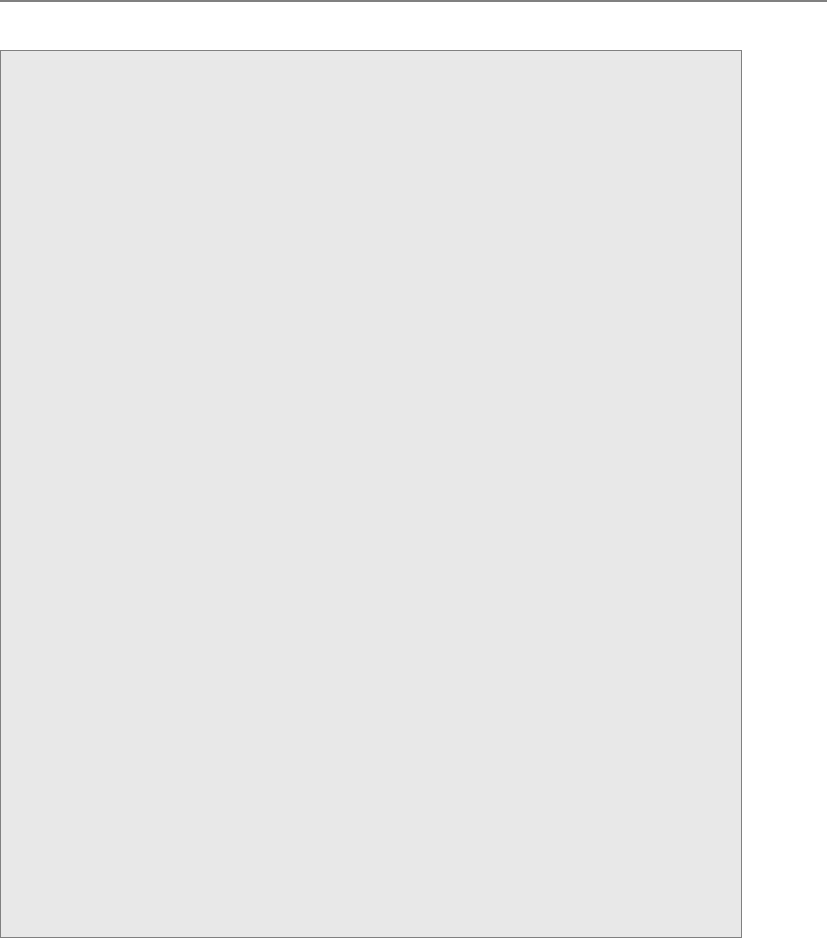

Intrusion Detection . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 255

Intrusion Prevention Systems . . . . . . . . . . . . . . . . . . . . . . . . . 265

Threats to Access Control . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 268

Dictionary Attack . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 269

Brute Force Attacks . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 270

Spoofing at Logon . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 270

CISSP All-in-One Exam Guide

x

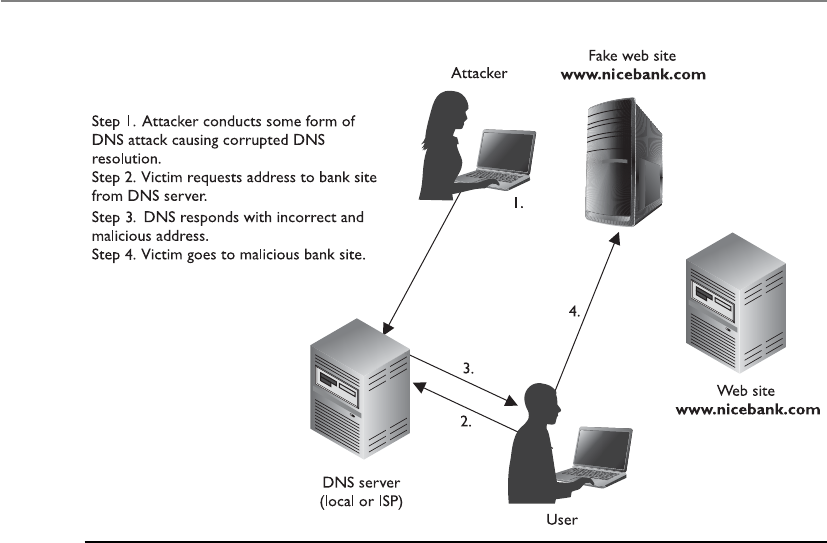

Phishing and Pharming . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 271

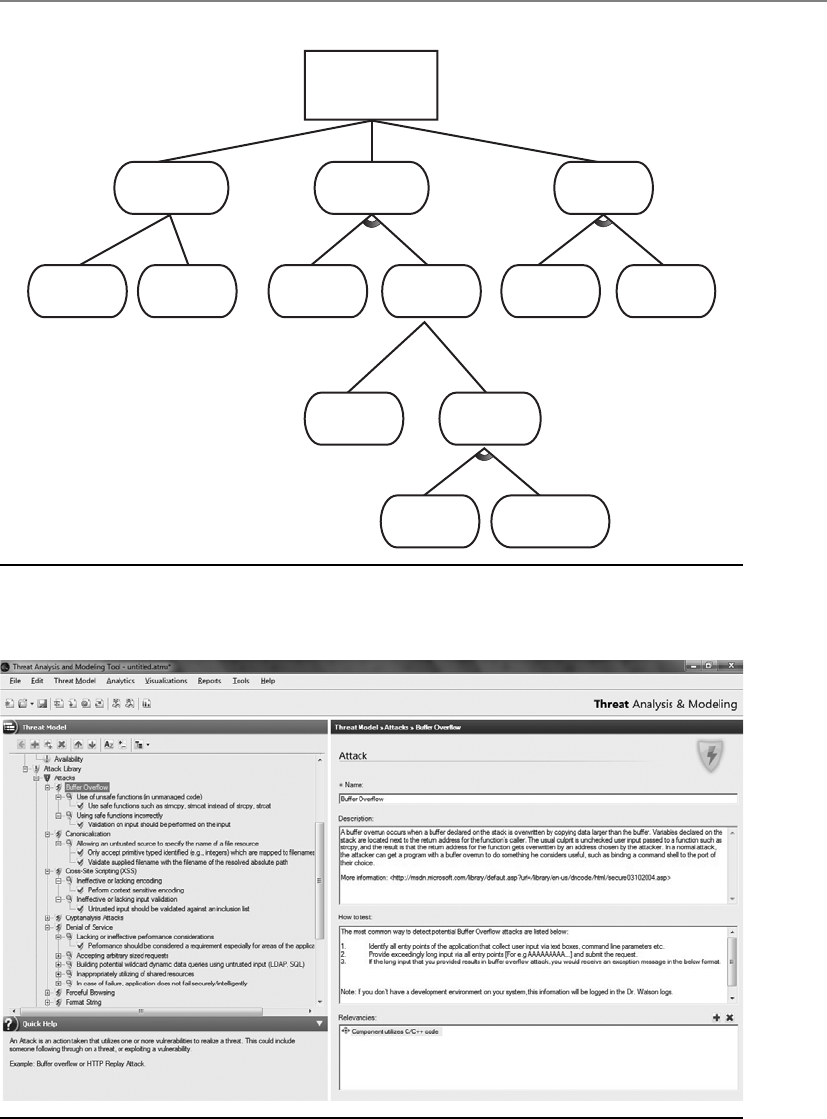

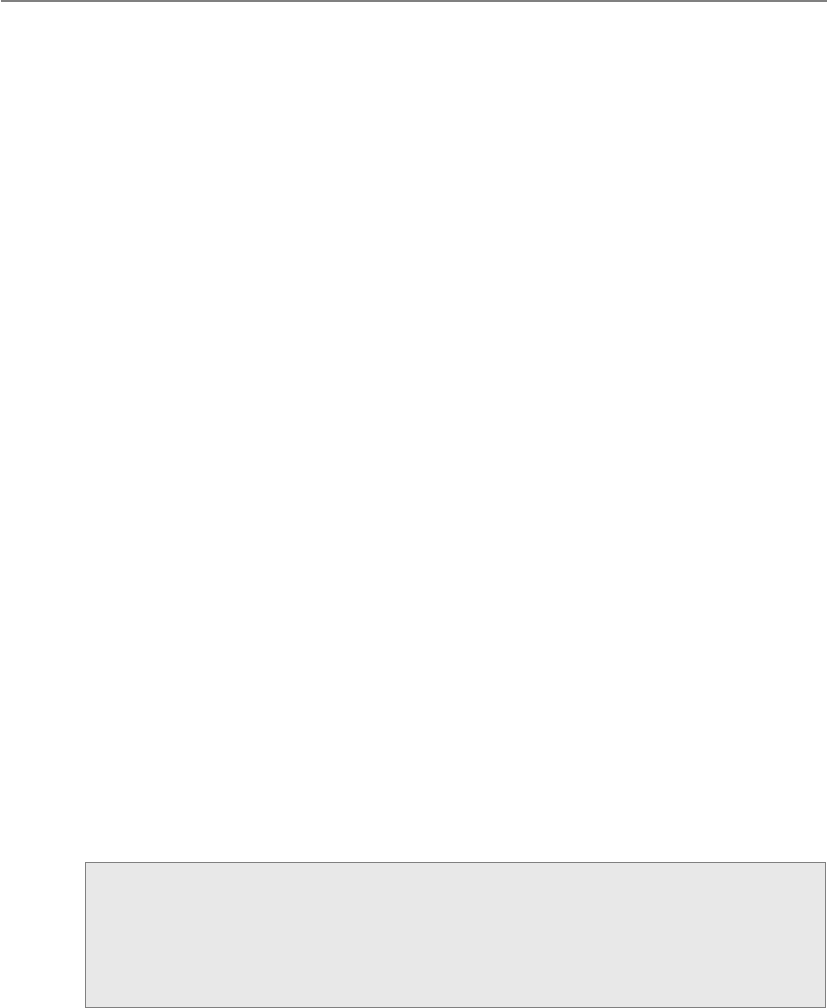

Threat Modeling . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 273

Summary . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 277

Quick Tips . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 277

Questions . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 282

Answers . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 291

Chapter 4 Security Architecture and Design . . . . . . . . . . . . . . . . . . . . . . . . . . 297

Computer Security . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 298

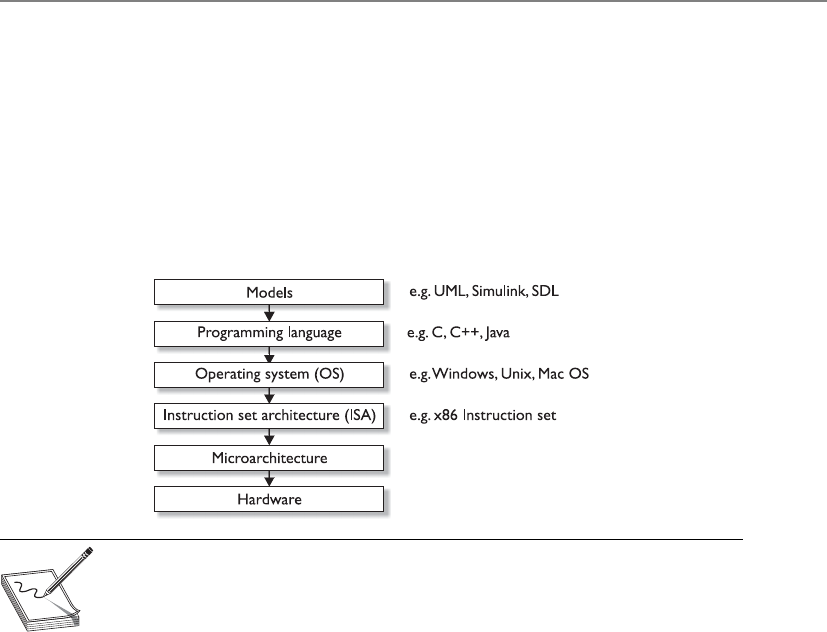

System Architecture . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 300

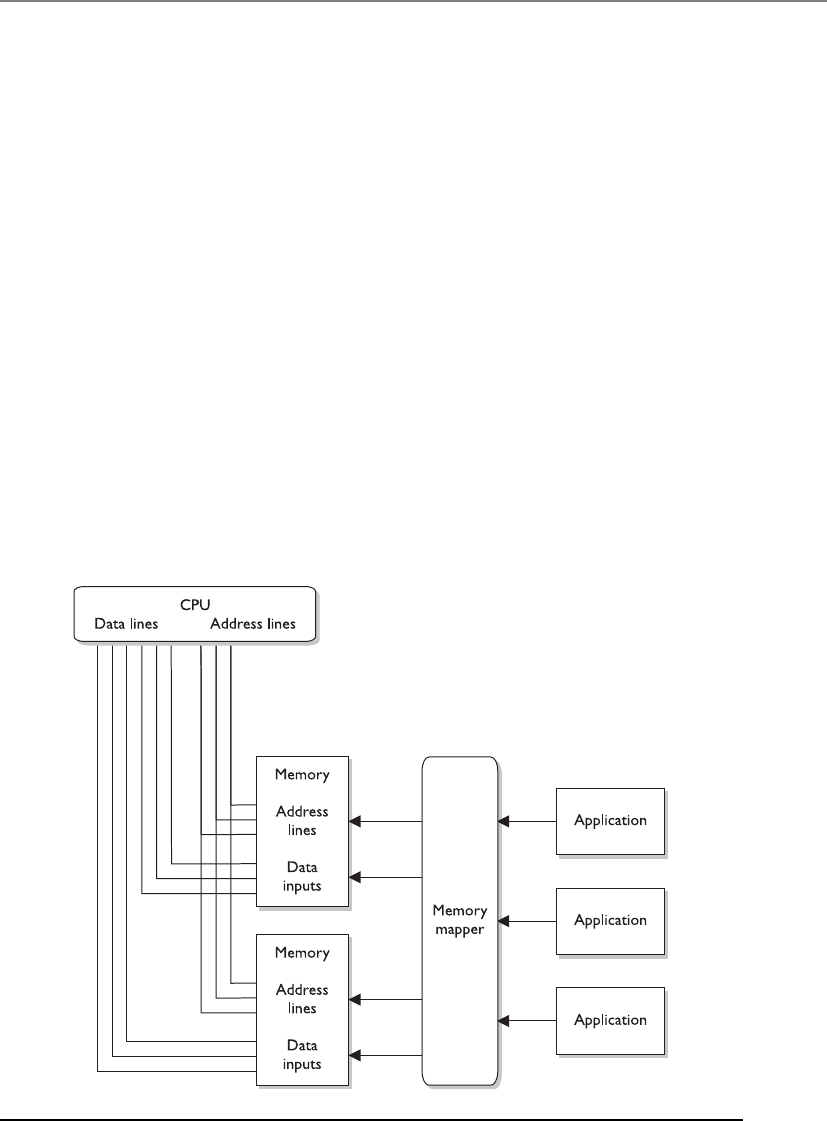

Computer Architecture . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 303

The Central Processing Unit . . . . . . . . . . . . . . . . . . . . . . . . . . 304

Multiprocessing . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 309

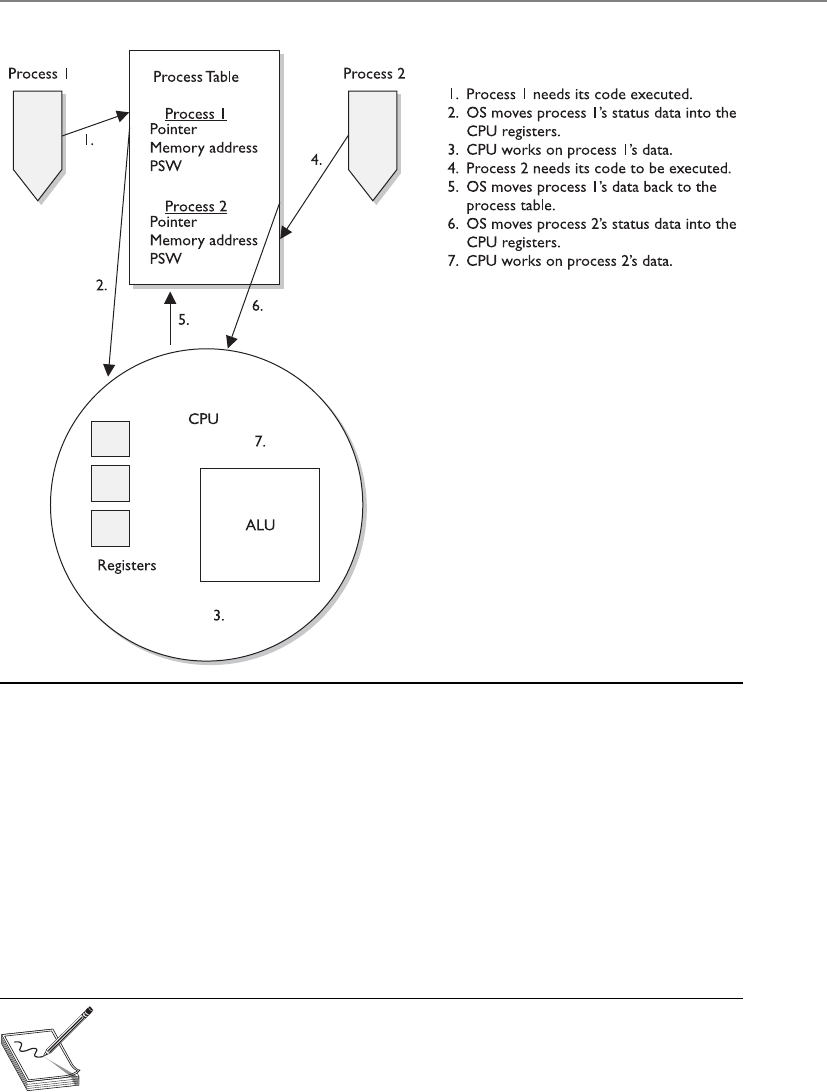

Operating System Components . . . . . . . . . . . . . . . . . . . . . . . 312

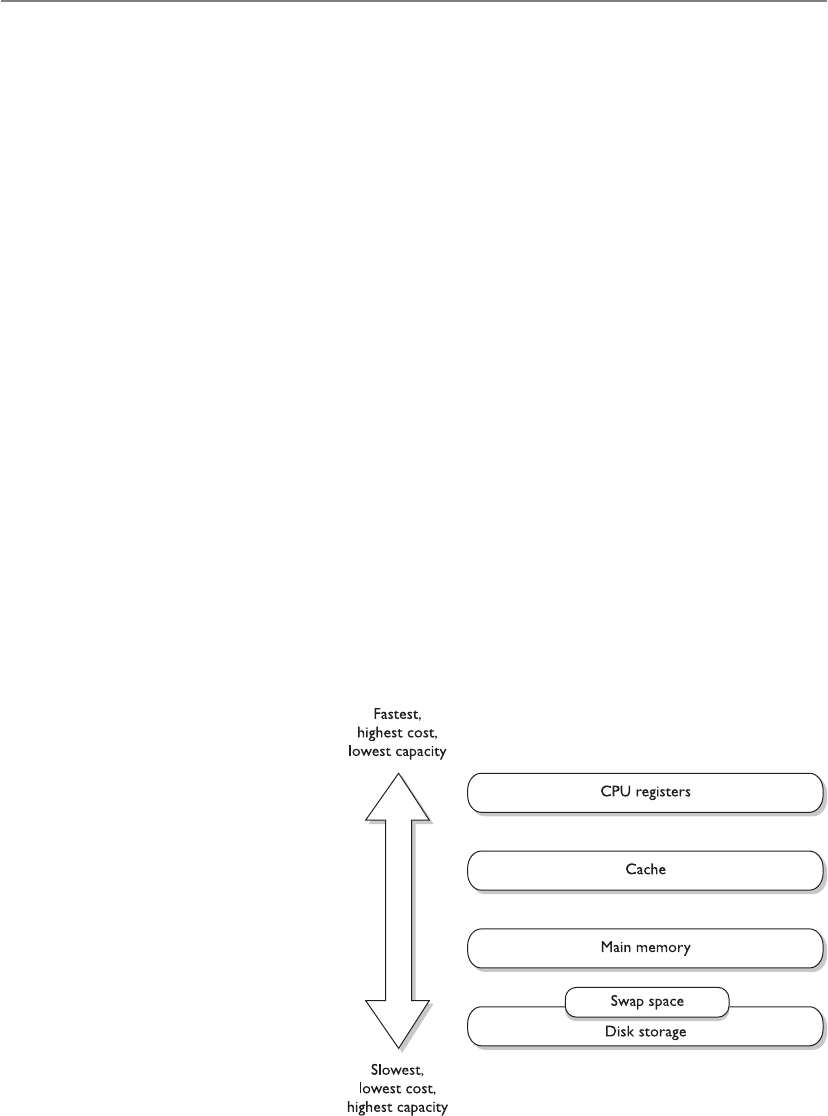

Memory Types . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 325

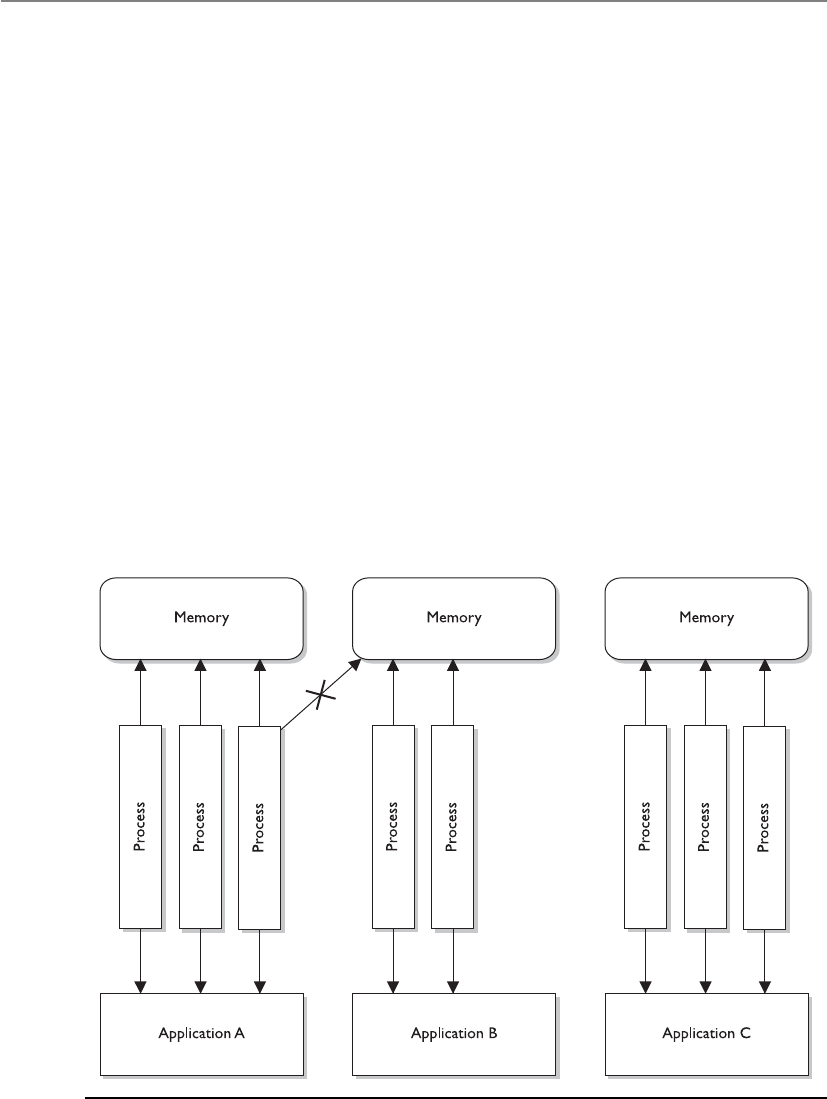

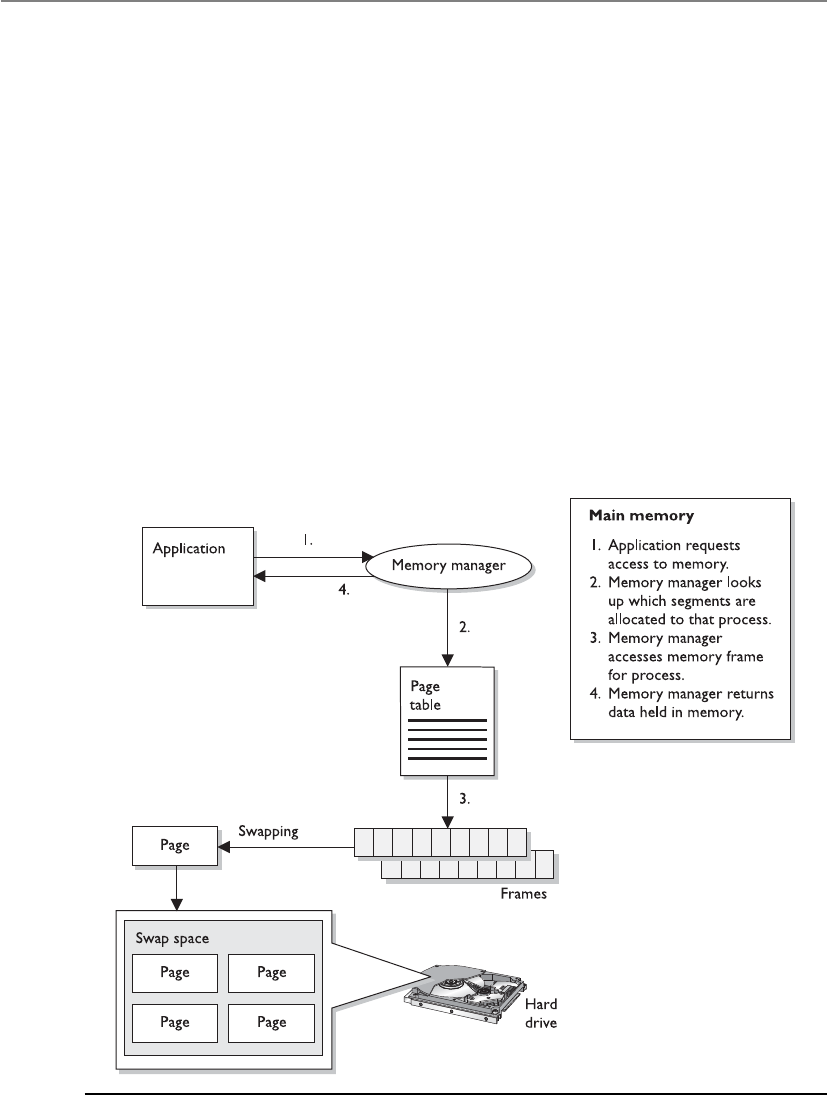

Virtual Memory . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 337

Input/Output Device Management . . . . . . . . . . . . . . . . . . . . . 340

CPU Architecture . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 342

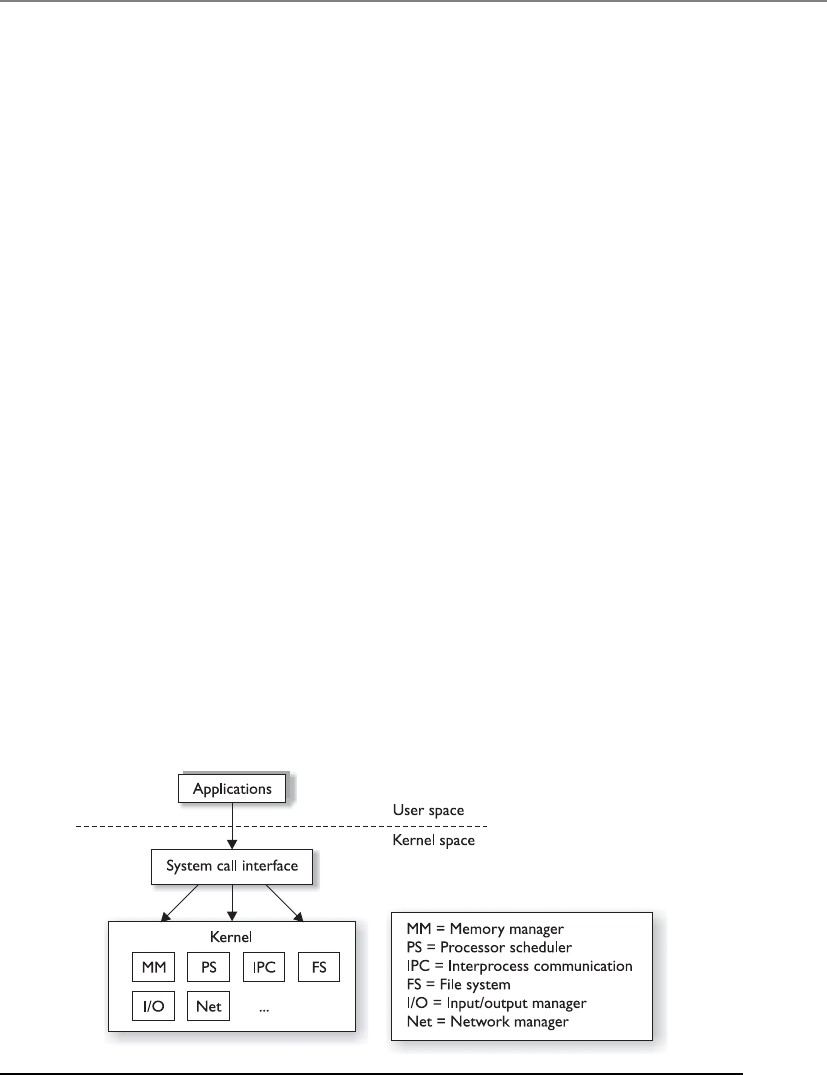

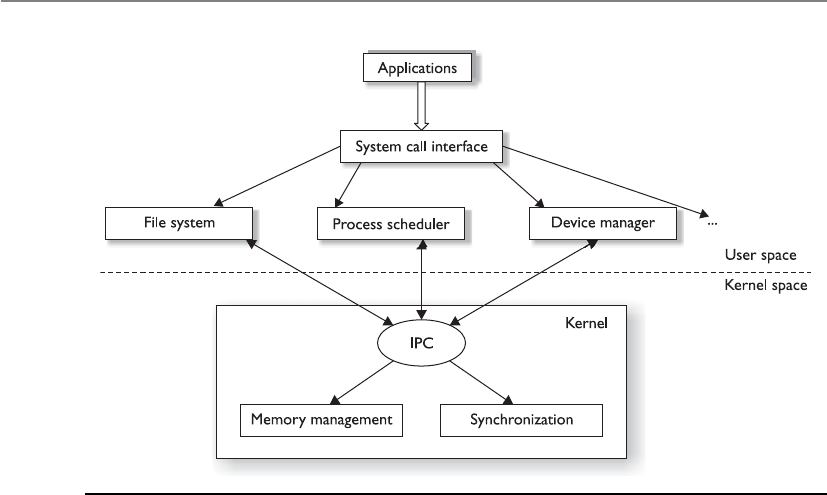

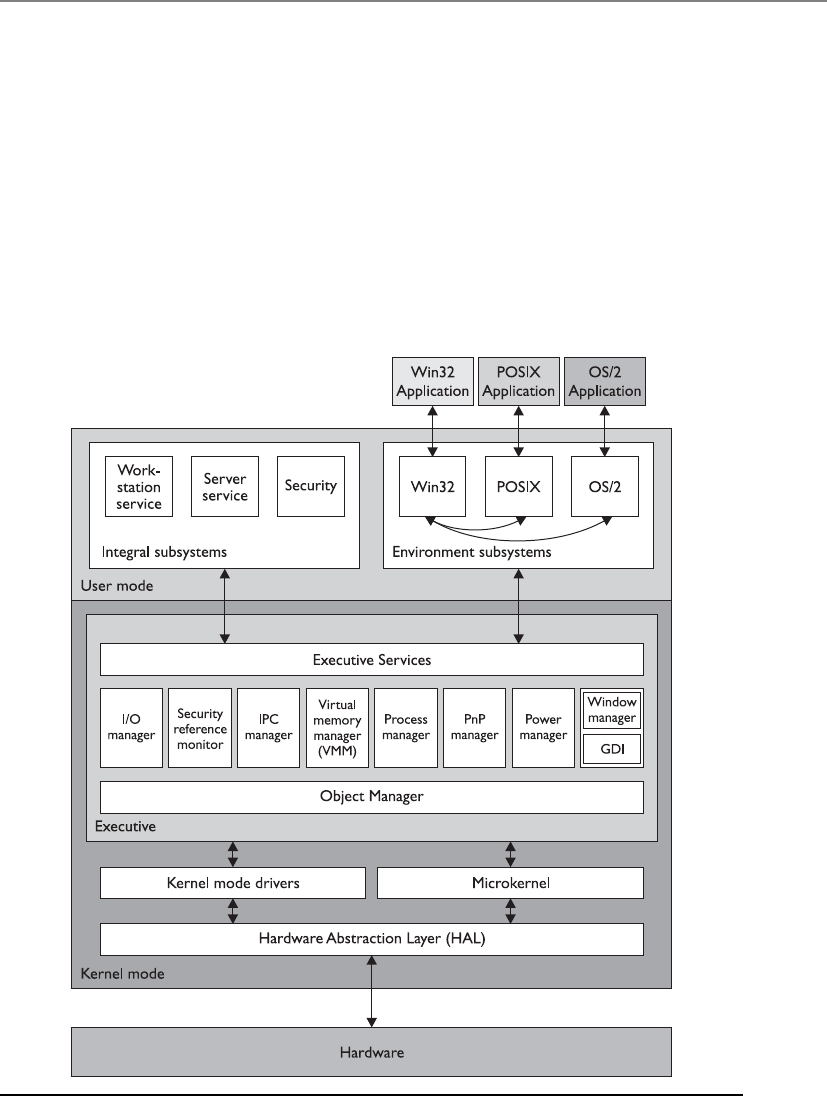

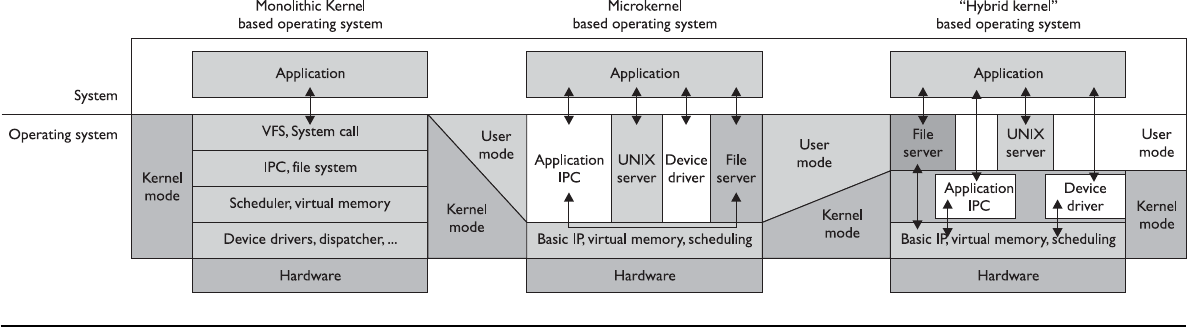

Operating System Architectures . . . . . . . . . . . . . . . . . . . . . . . . . . . . 347

Virtual Machines . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 355

System Security Architecture . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 357

Security Policy . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 357

Security Architecture Requirements . . . . . . . . . . . . . . . . . . . . 359

Security Models . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 365

State Machine Models . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 367

Bell-LaPadula Model . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 369

Biba Model . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 372

Clark-Wilson Model . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 374

Information Flow Model . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 377

Noninterference Model . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 380

Lattice Model . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 381

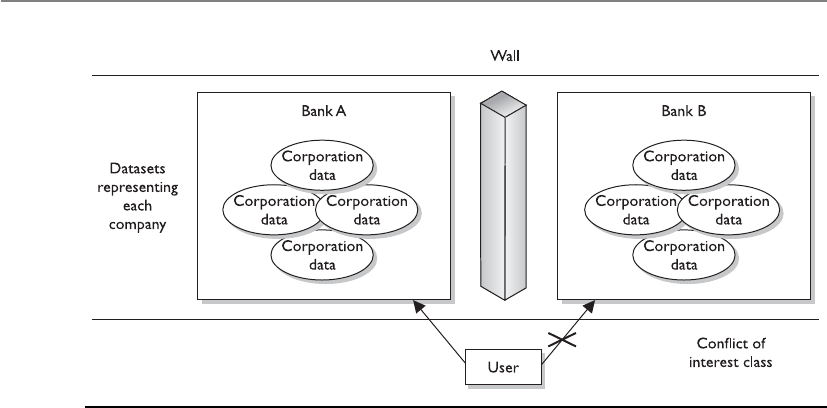

Brewer and Nash Model . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 383

Graham-Denning Model . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 384

Harrison-Ruzzo-Ullman Model . . . . . . . . . . . . . . . . . . . . . . . 385

Security Modes of Operation . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 386

Dedicated Security Mode . . . . . . . . . . . . . . . . . . . . . . . . . . . . 387

System High-Security Mode . . . . . . . . . . . . . . . . . . . . . . . . . . 387

Compartmented Security Mode . . . . . . . . . . . . . . . . . . . . . . . 387

Multilevel Security Mode . . . . . . . . . . . . . . . . . . . . . . . . . . . . 388

Trust and Assurance . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 390

Systems Evaluation Methods . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 391

Why Put a Product Through Evaluation? . . . . . . . . . . . . . . . . 391

The Orange Book . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 392

The Orange Book and the Rainbow Series . . . . . . . . . . . . . . . . . . . . 397

The Red Book . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 398

Information Technology Security Evaluation Criteria . . . . . . . . . . . . . . . . . . . . . . . . . . . 399


Common Criteria . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 402

Certification vs. Accreditation . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 406

Certification . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 406

Accreditation . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 406

Open vs. Closed Systems . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 408

Open Systems . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 408

Closed Systems . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 408

A Few Threats to Review . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 409

Maintenance Hooks . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 409

Time-of-Check/Time-of-Use Attacks . . . . . . . . . . . . . . . . . . . . 410

Summary . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 412

Quick Tips . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 413

Questions . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 416

Answers . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 423

Chapter 5 Physical and Environmental Security . . . . . . . . . . . . . . . . . . . . . . . . 427

Introduction to Physical Security . . . . . . . . . . . . . . . . . . . . . . . . . . . 427

The Planning Process . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 430

Crime Prevention Through Environmental Design . . . . . . . . 435

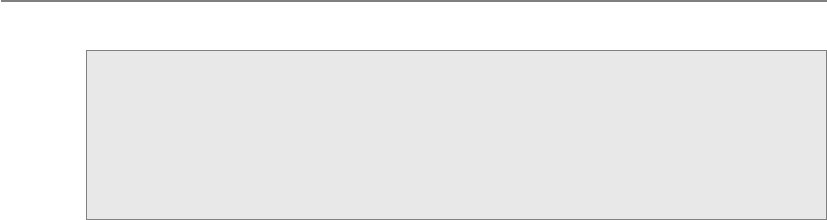

Designing a Physical Security Program . . . . . . . . . . . . . . . . . 442

Protecting Assets . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 457

Internal Support Systems . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 458

Electric Power . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 459

Environmental Issues . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 465

Ventilation . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 467

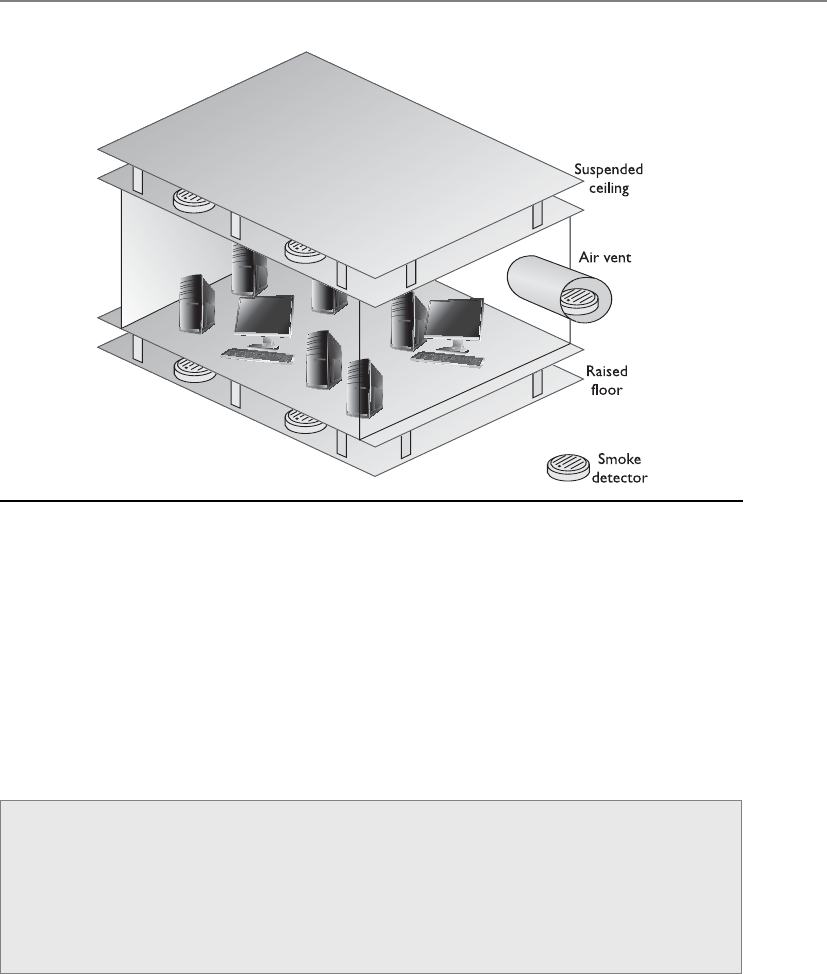

Fire Prevention, Detection, and Suppression . . . . . . . . . . . . . 467

Perimeter Security . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 475

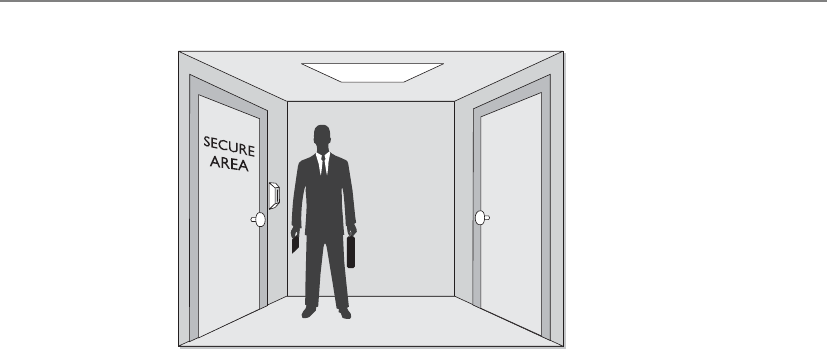

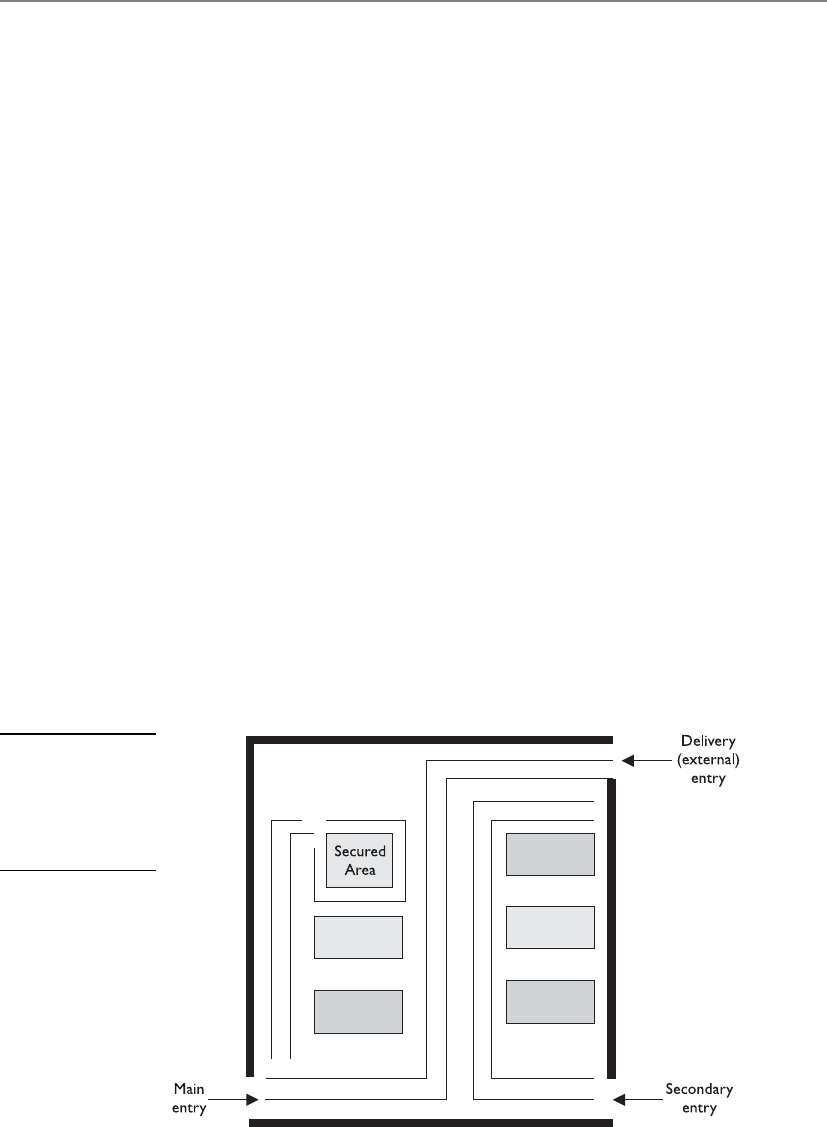

Facility Access Control . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 476

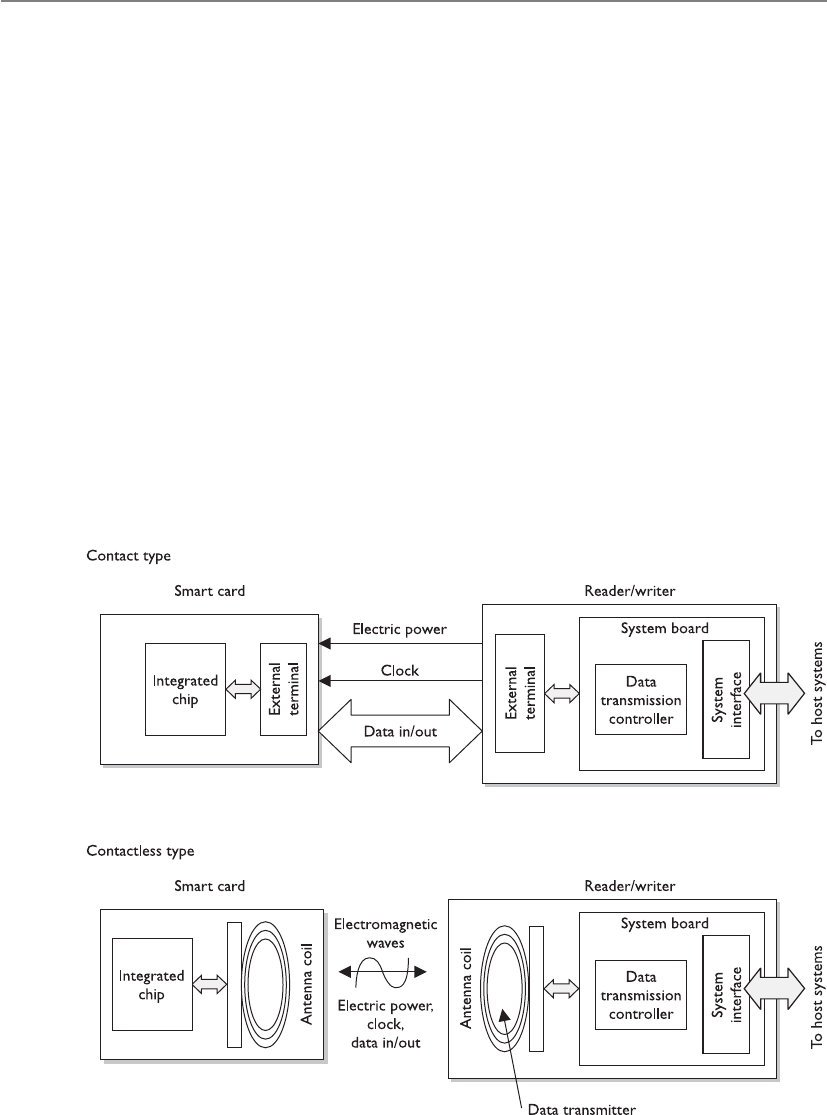

Personnel Access Controls . . . . . . . . . . . . . . . . . . . . . . . . . . . 483

External Boundary Protection Mechanisms . . . . . . . . . . . . . . 484

Intrusion Detection Systems . . . . . . . . . . . . . . . . . . . . . . . . . . 493

Patrol Force and Guards . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 497

Dogs . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 497

Auditing Physical Access . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 498

Testing and Drills . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 498

Summary . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 499

Quick Tips . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 499

Questions . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 502

Answers . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 509

Chapter 6 Telecommunications and Network Security . . . . . . . . . . . . . . . . . . 515

Telecommunications . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 517

Open Systems Interconnection Reference Model . . . . . . . . . . . . . . . 517

Protocol . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 518

Application Layer . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 521

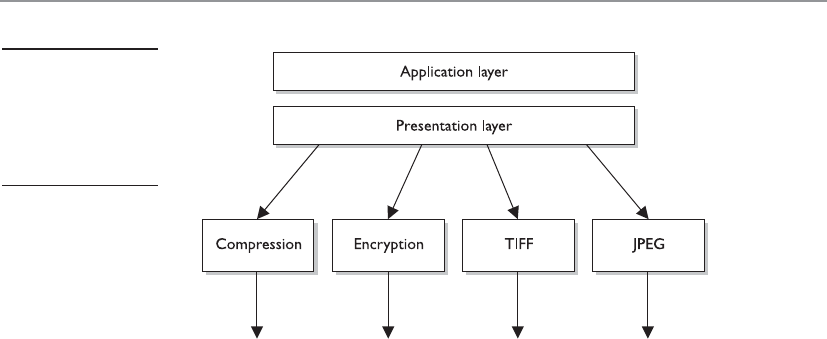

Presentation Layer . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 522

Session Layer . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 523

CISSP All-in-One Exam Guide

xii

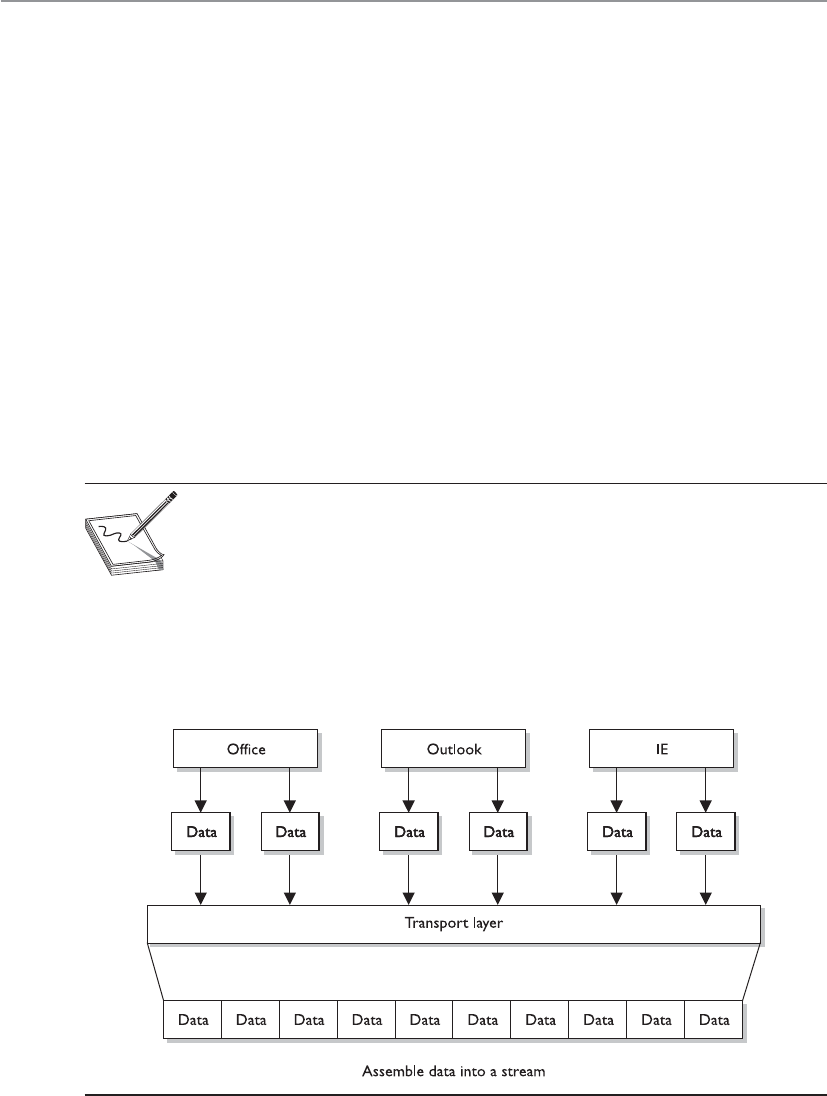

Transport Layer . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 525

Network Layer . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 527

Data Link Layer . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 528

Physical Layer . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 530

Functions and Protocols in the OSI Model . . . . . . . . . . . . . . 530

Tying the Layers Together . . . . . . . . . . . . . . . . . . . . . . . . . . . . 532

TCP/IP Model . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 534

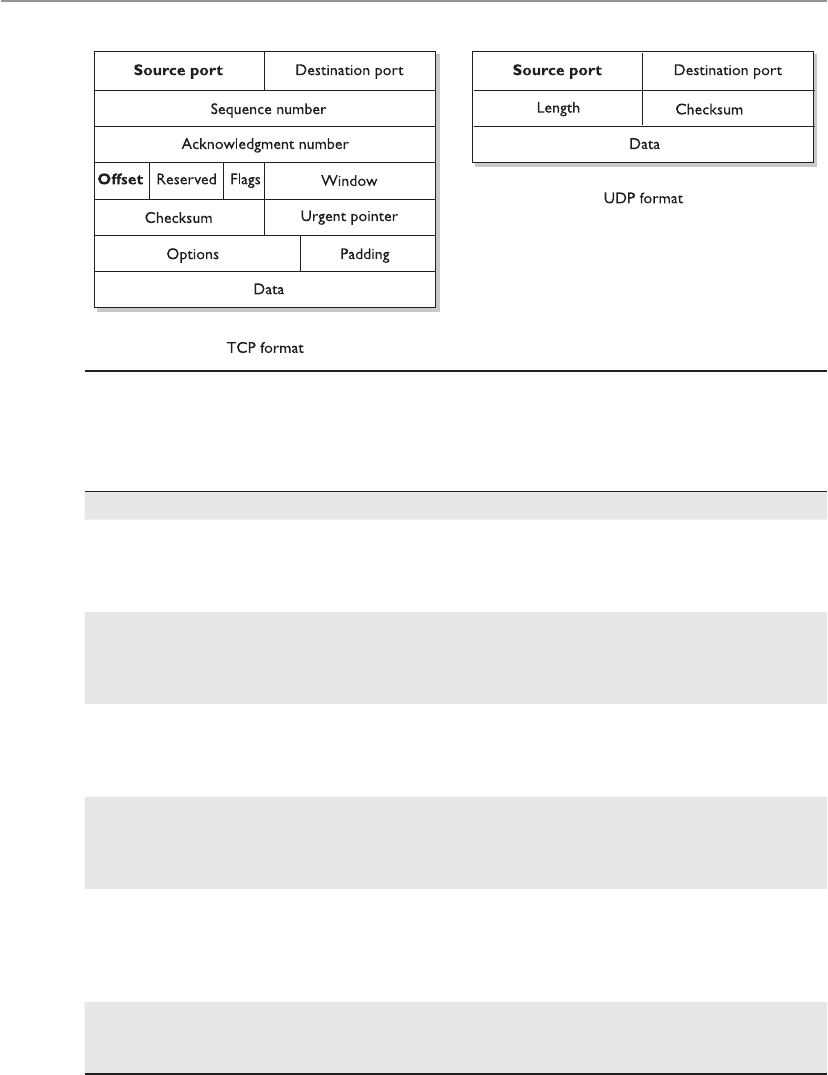

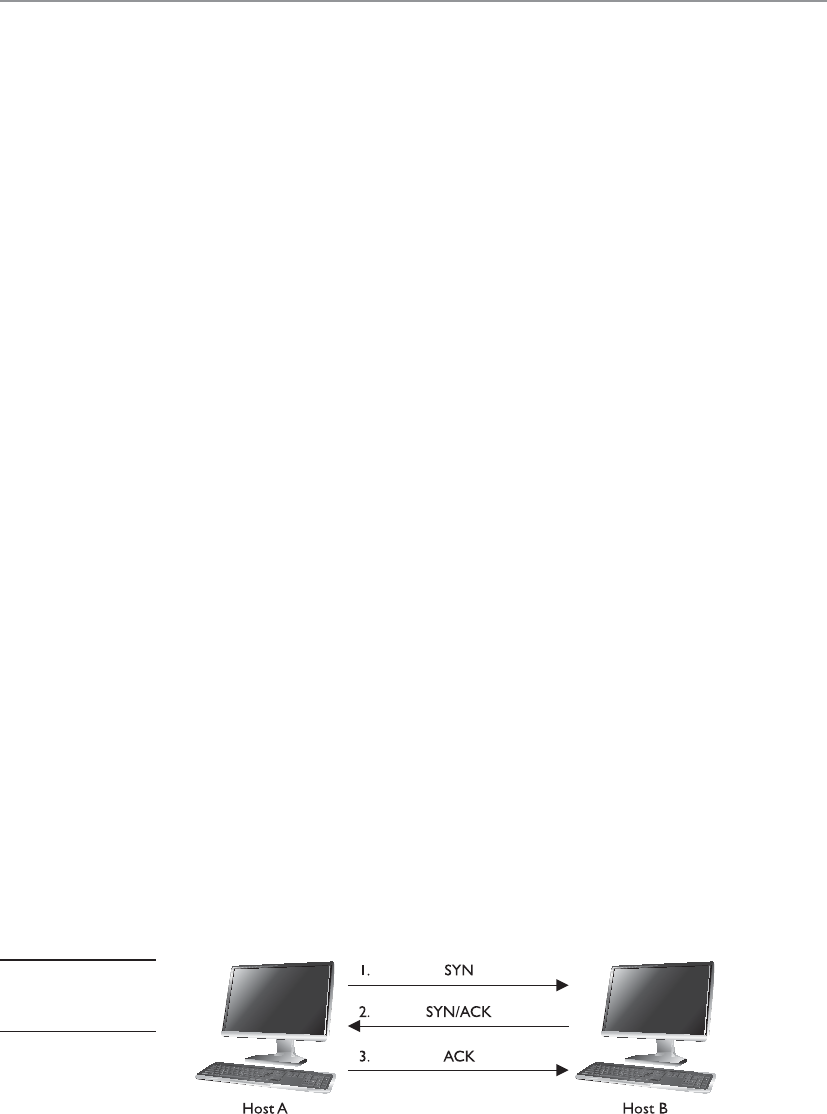

TCP . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 535

IP Addressing . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 541

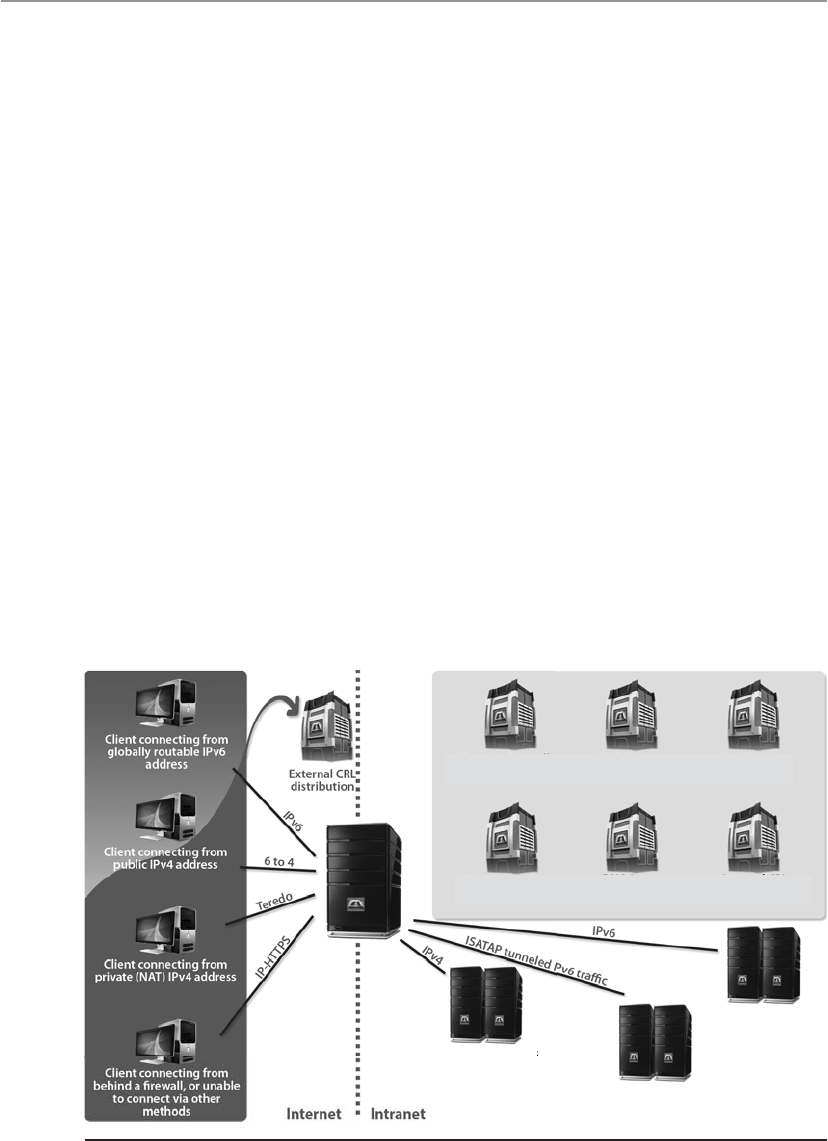

IPv6 . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 544

Layer 2 Security Standards . . . . . . . . . . . . . . . . . . . . . . . . . . . 547

Types of Transmission . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 550

Analog and Digital . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 550

Asynchronous and Synchronous . . . . . . . . . . . . . . . . . . . . . . 552

Broadband and Baseband . . . . . . . . . . . . . . . . . . . . . . . . . . . . 554

Cabling . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 556

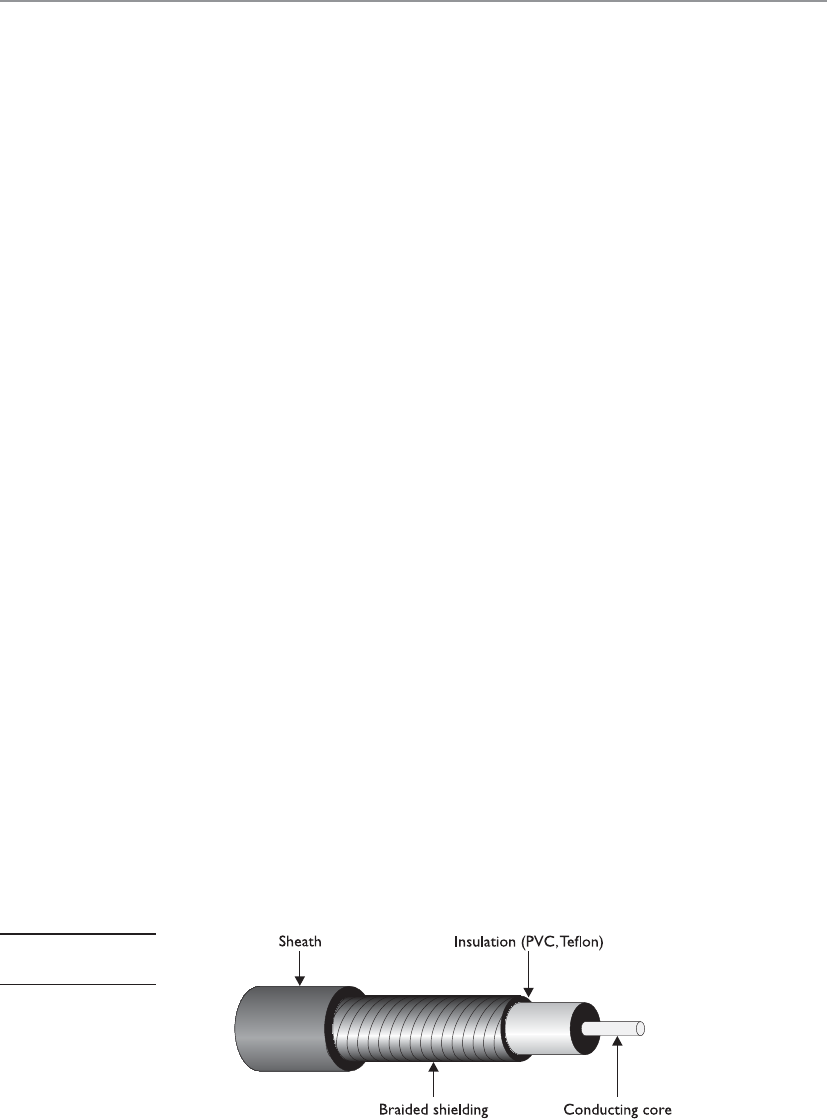

Coaxial Cable . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 557

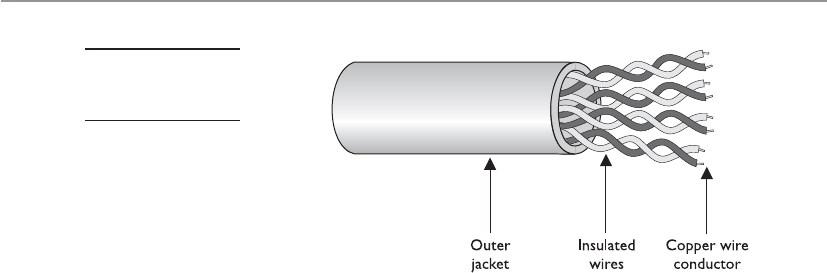

Twisted-Pair Cable . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 557

Fiber-Optic Cable . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 558

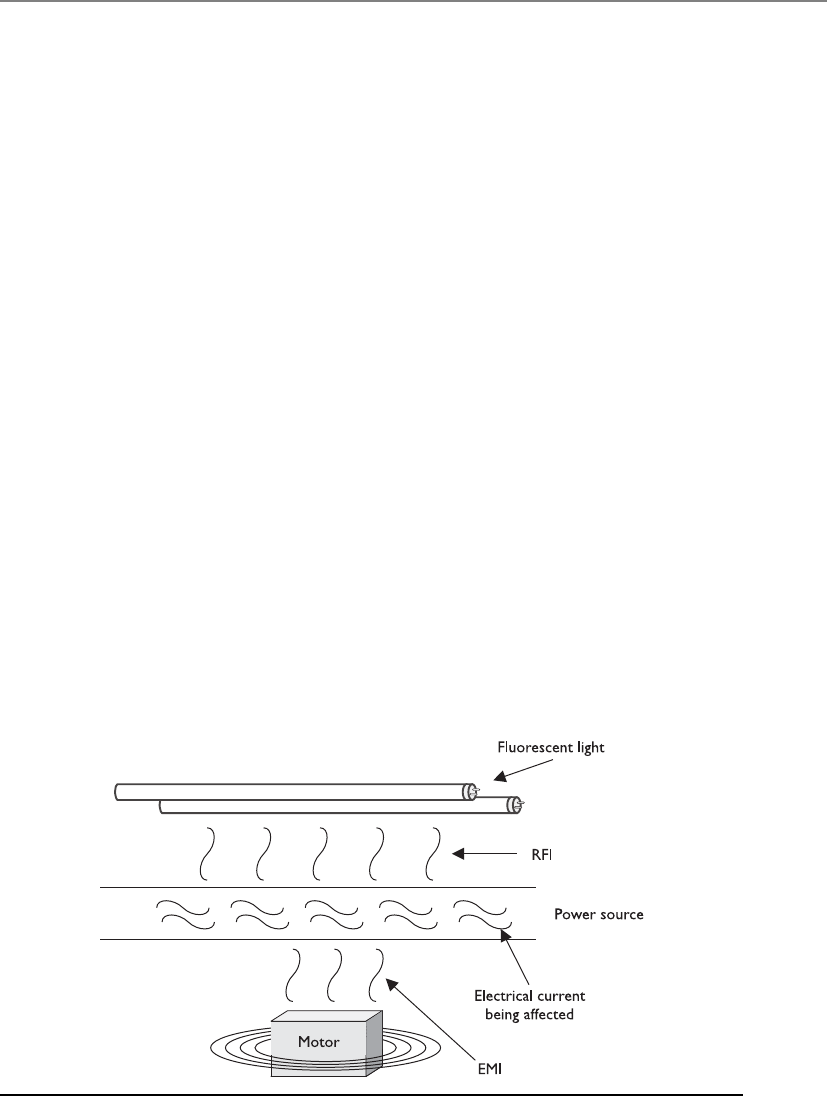

Cabling Problems . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 560

Networking Foundations . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 562

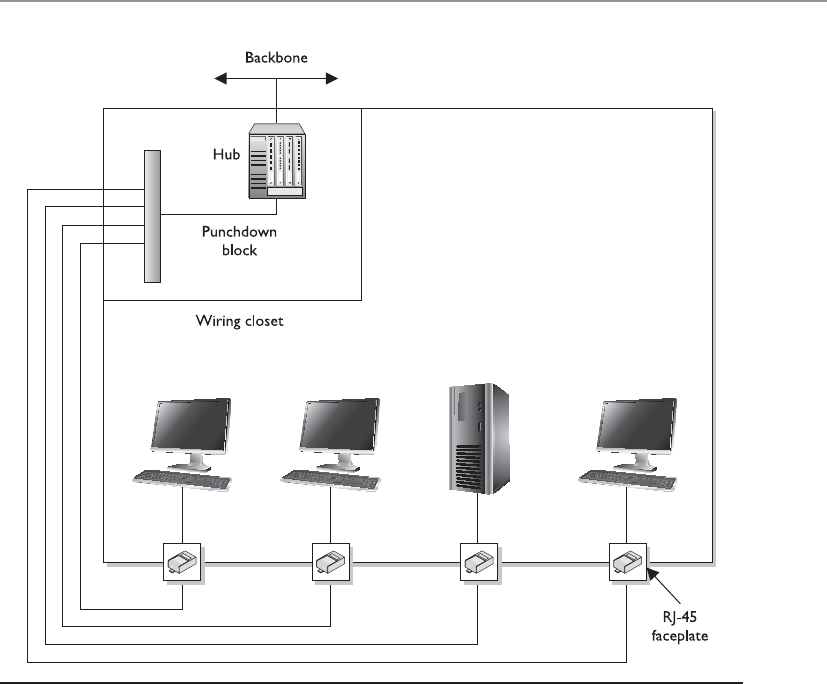

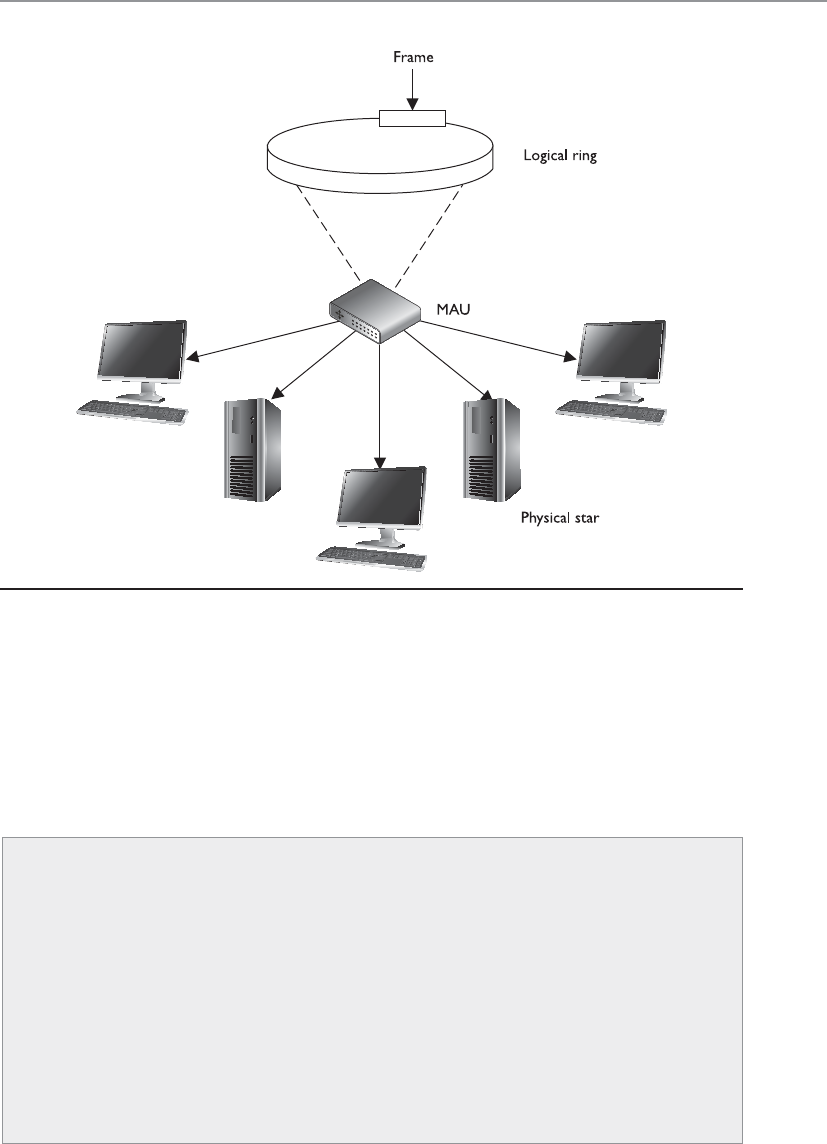

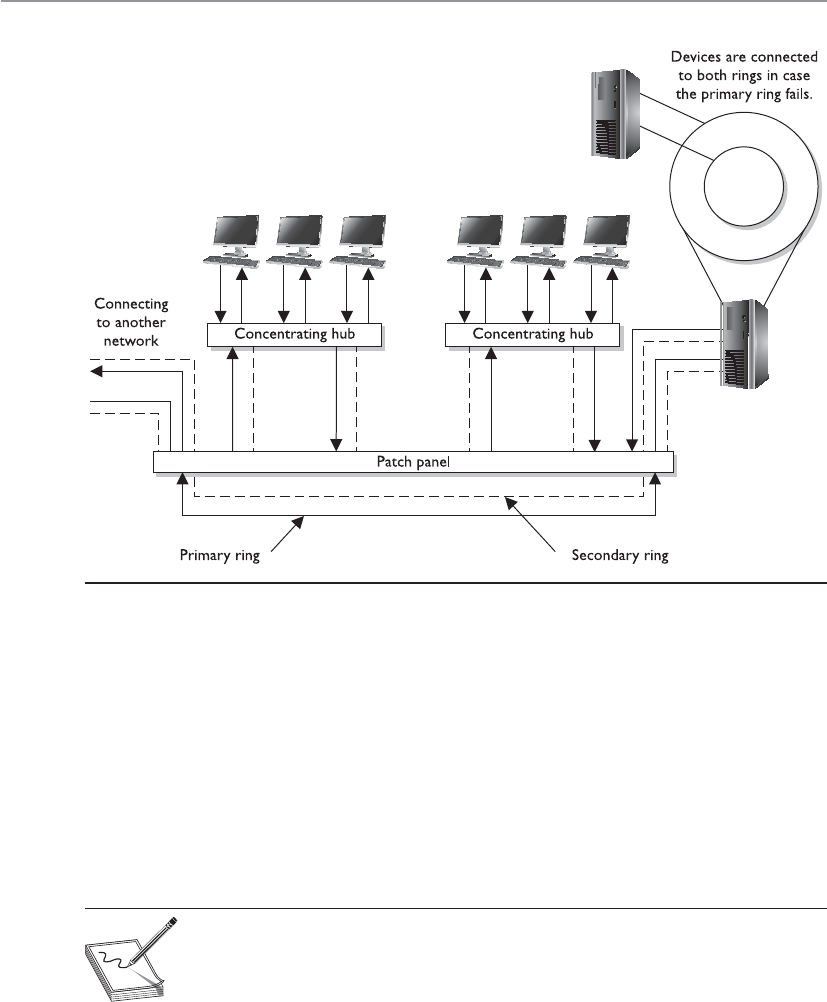

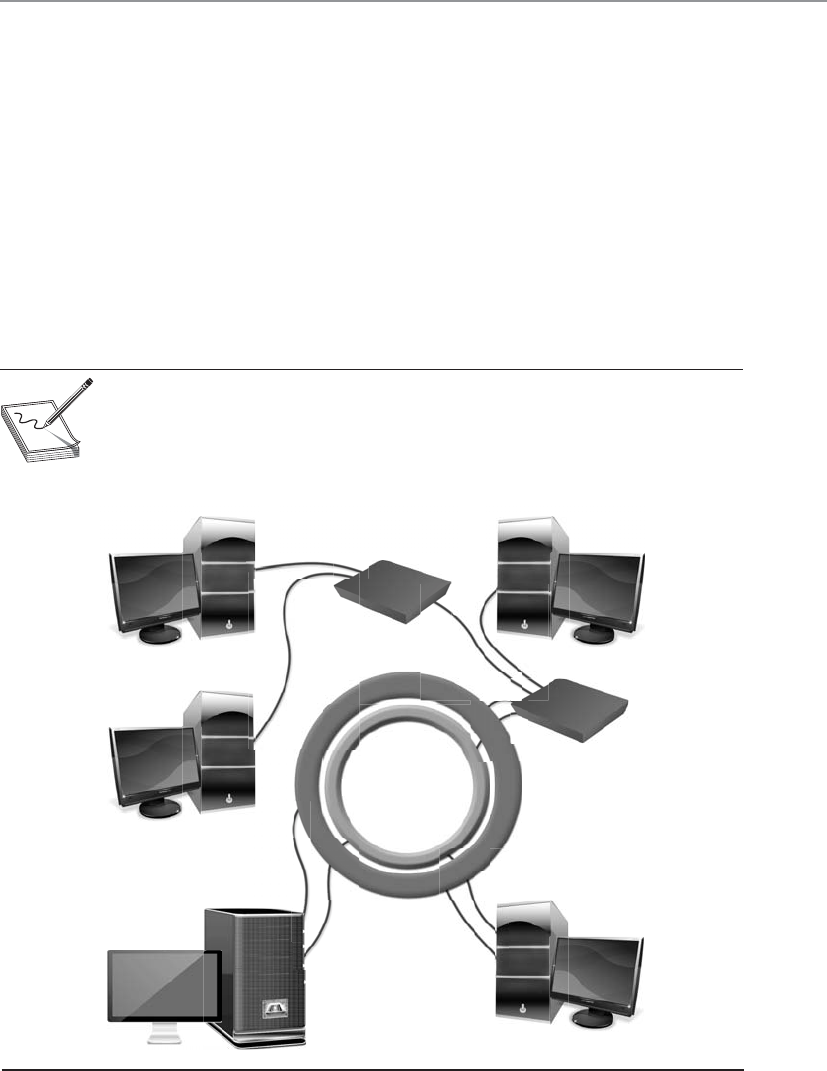

Network Topology . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 563

Media Access Technologies . . . . . . . . . . . . . . . . . . . . . . . . . . . 565

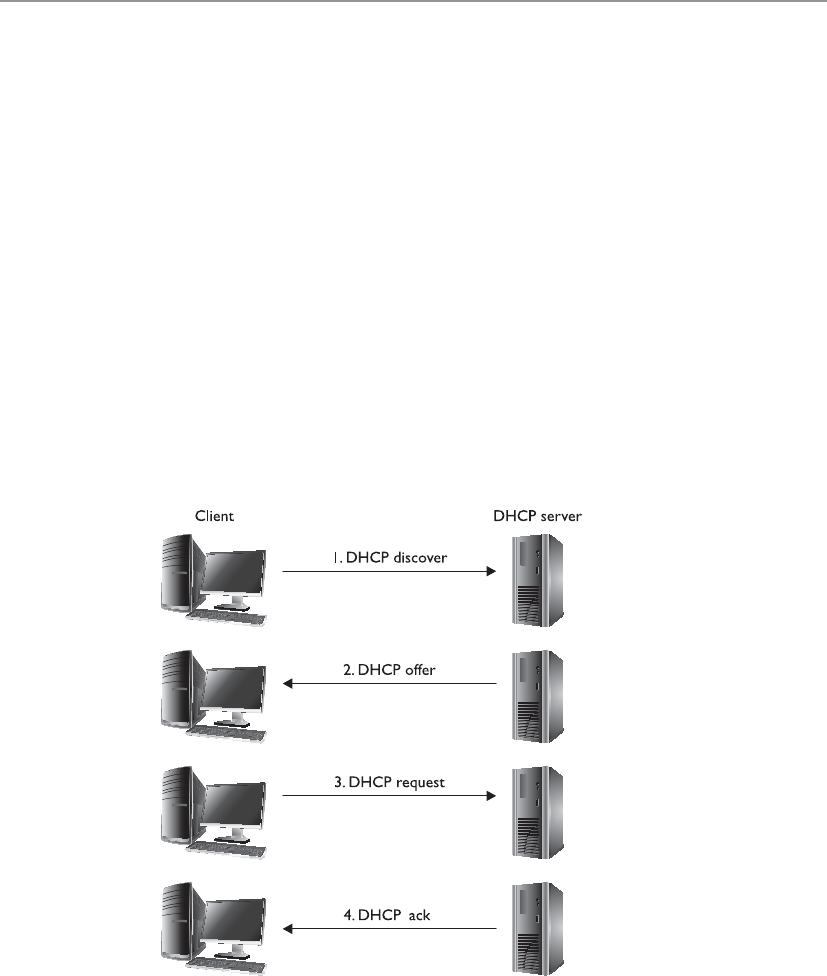

Network Protocols and Services . . . . . . . . . . . . . . . . . . . . . . . 580

Domain Name Service . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 590

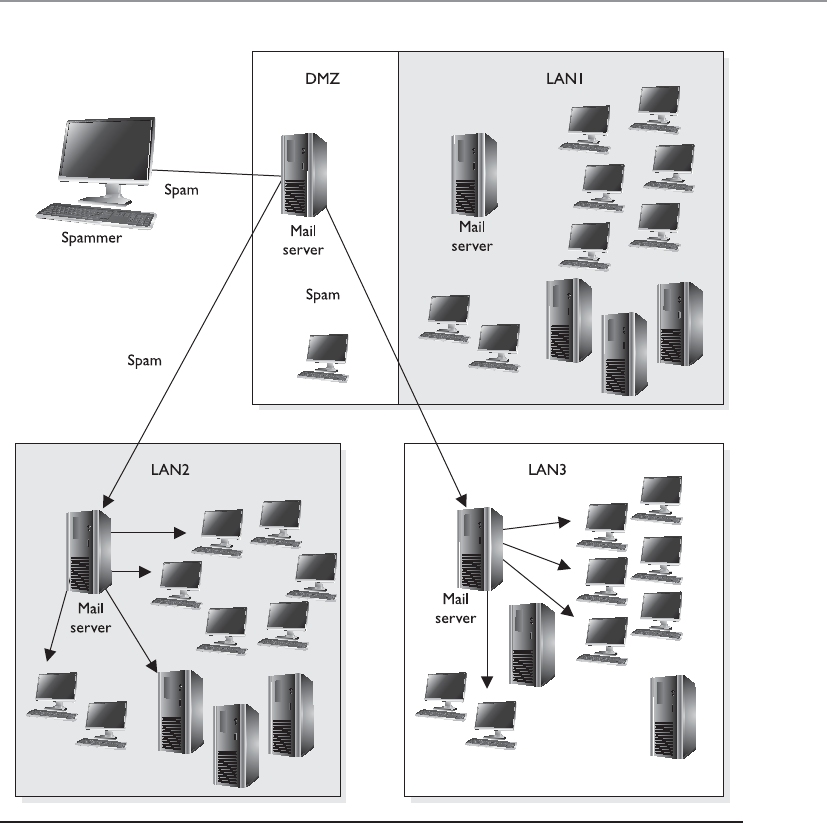

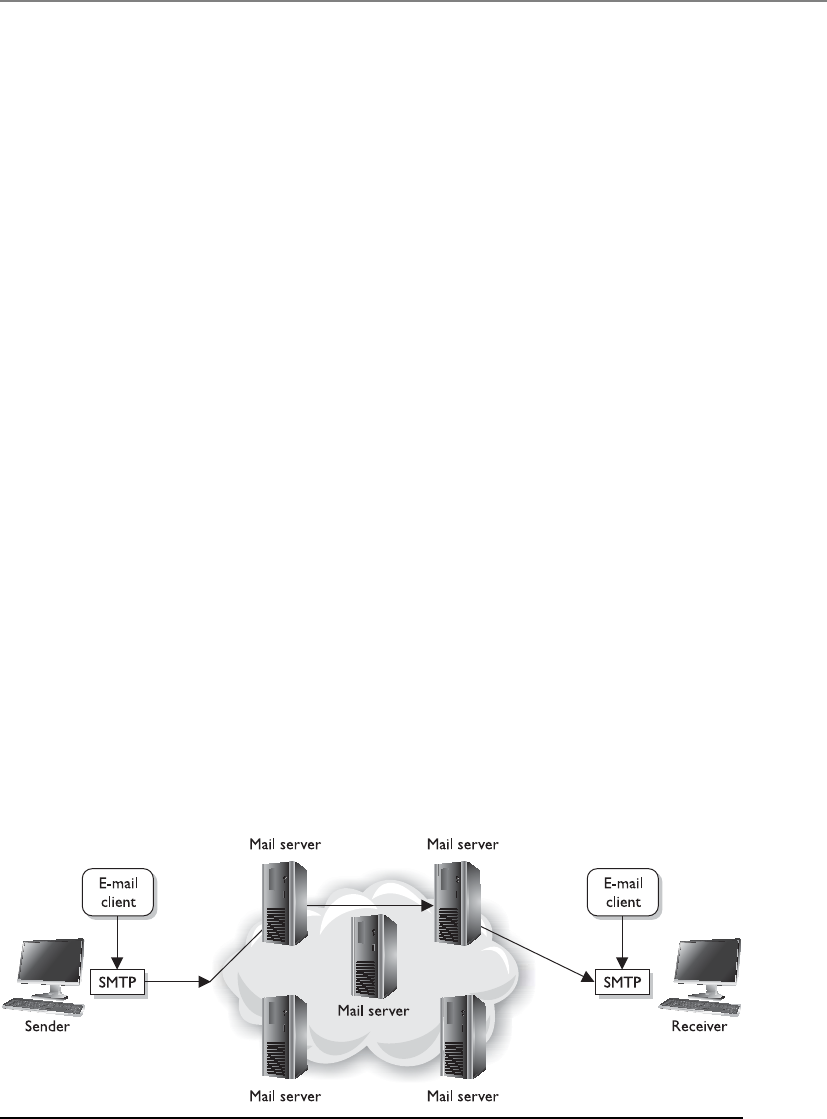

E-mail Services . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 599

Network Address Translation . . . . . . . . . . . . . . . . . . . . . . . . . 604

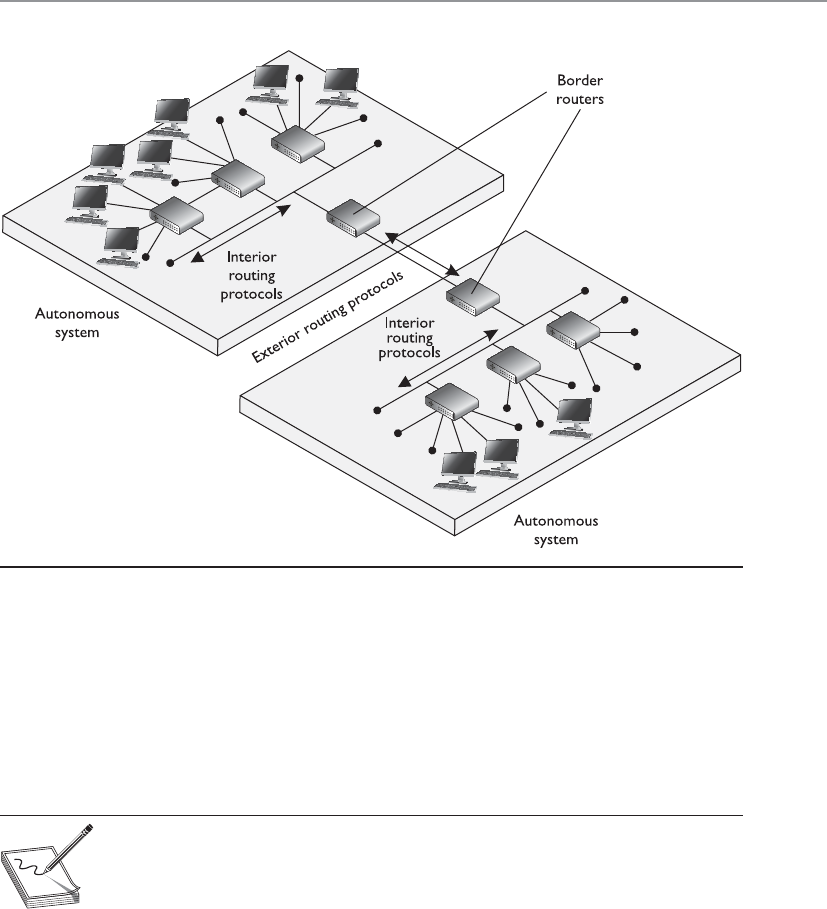

Routing Protocols . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 608

Networking Devices . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 612

Repeaters . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 612

Bridges . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 613

Routers . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 615

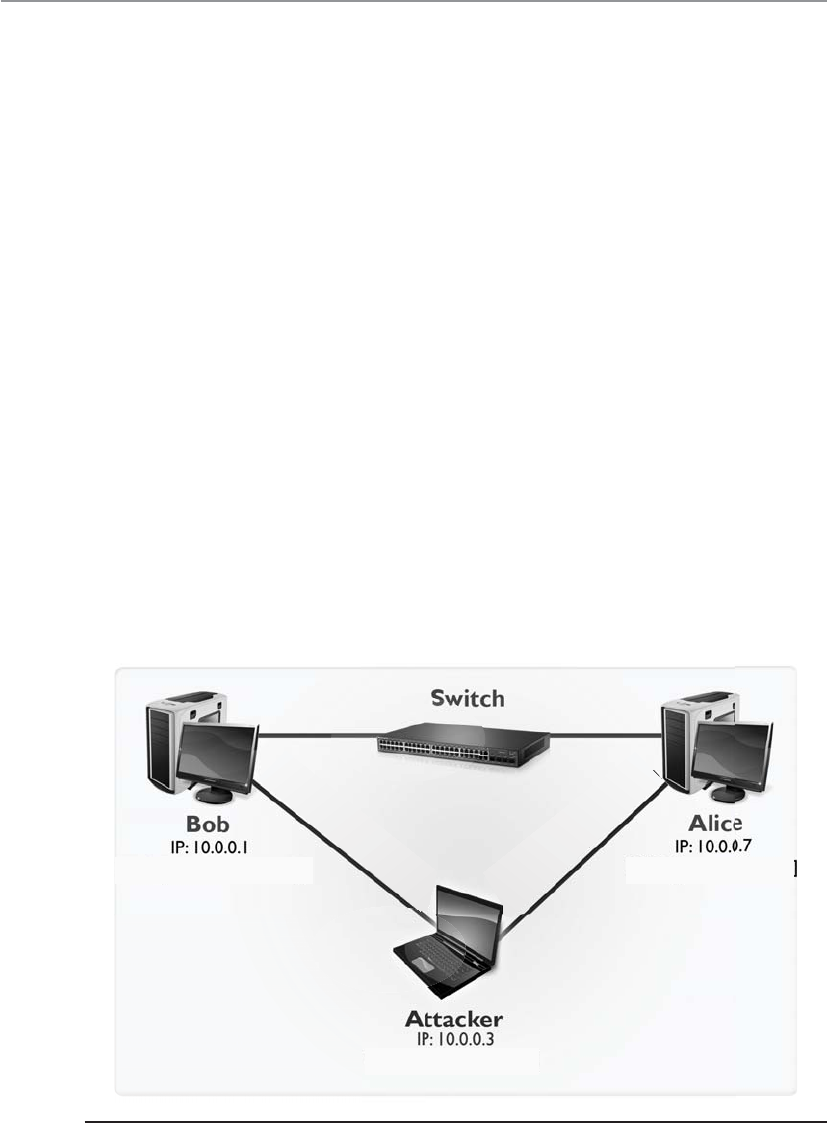

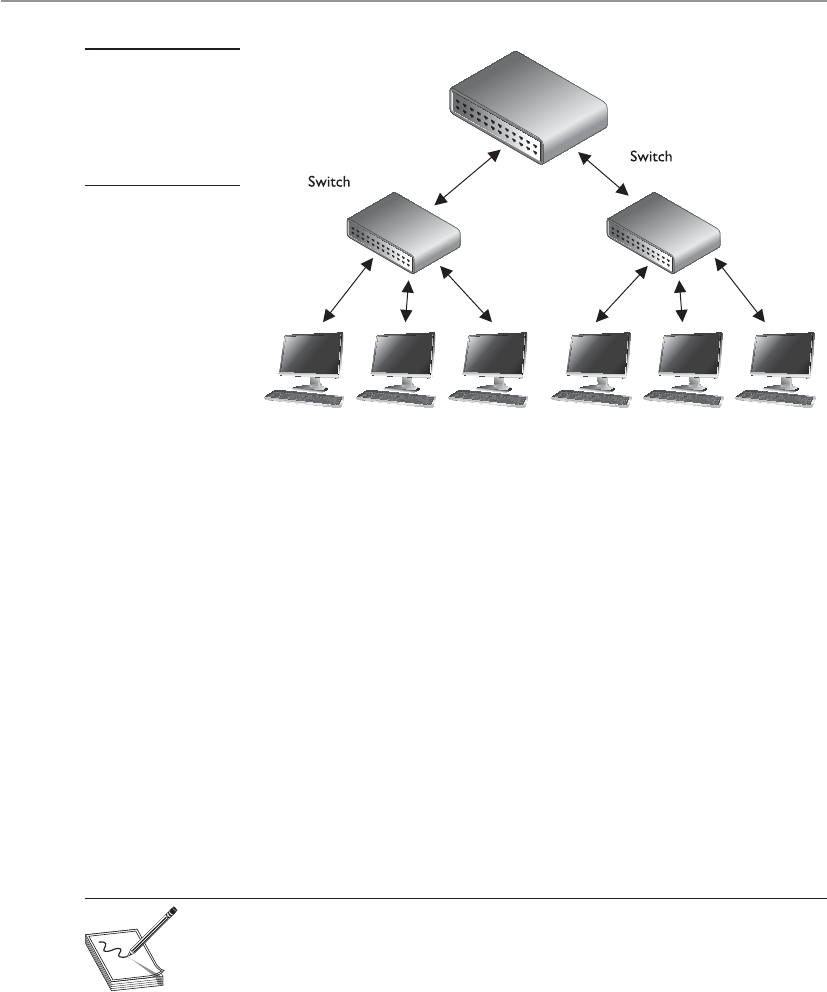

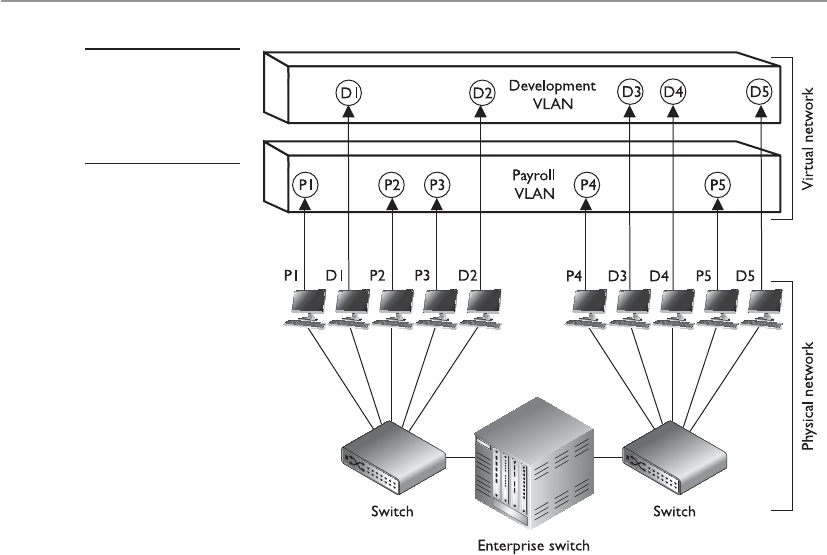

Switches . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 617

Gateways . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 621

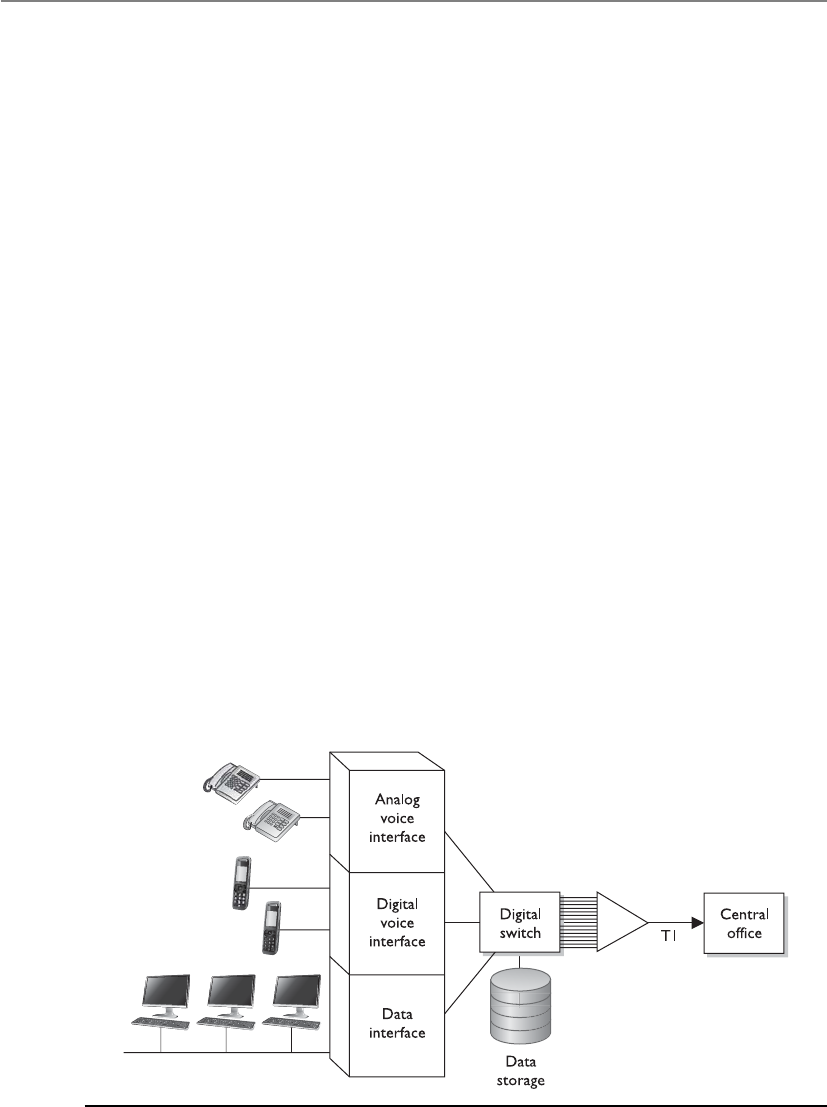

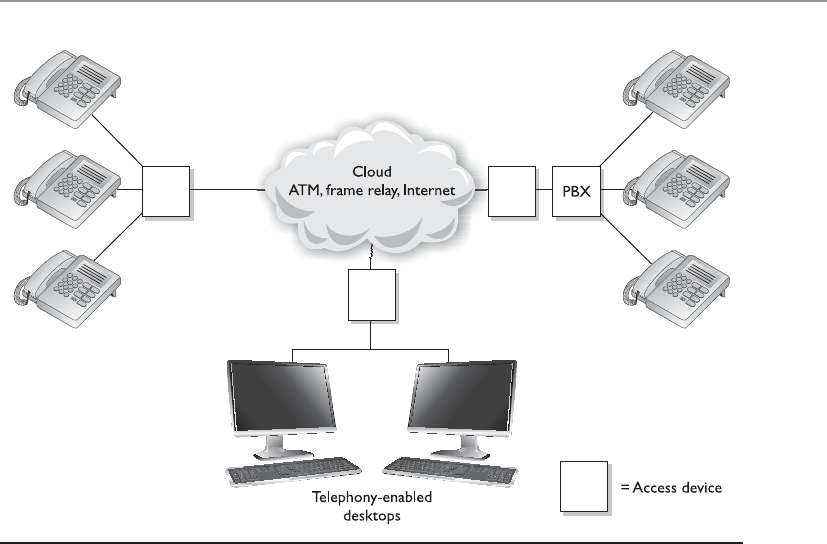

PBXs . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 624

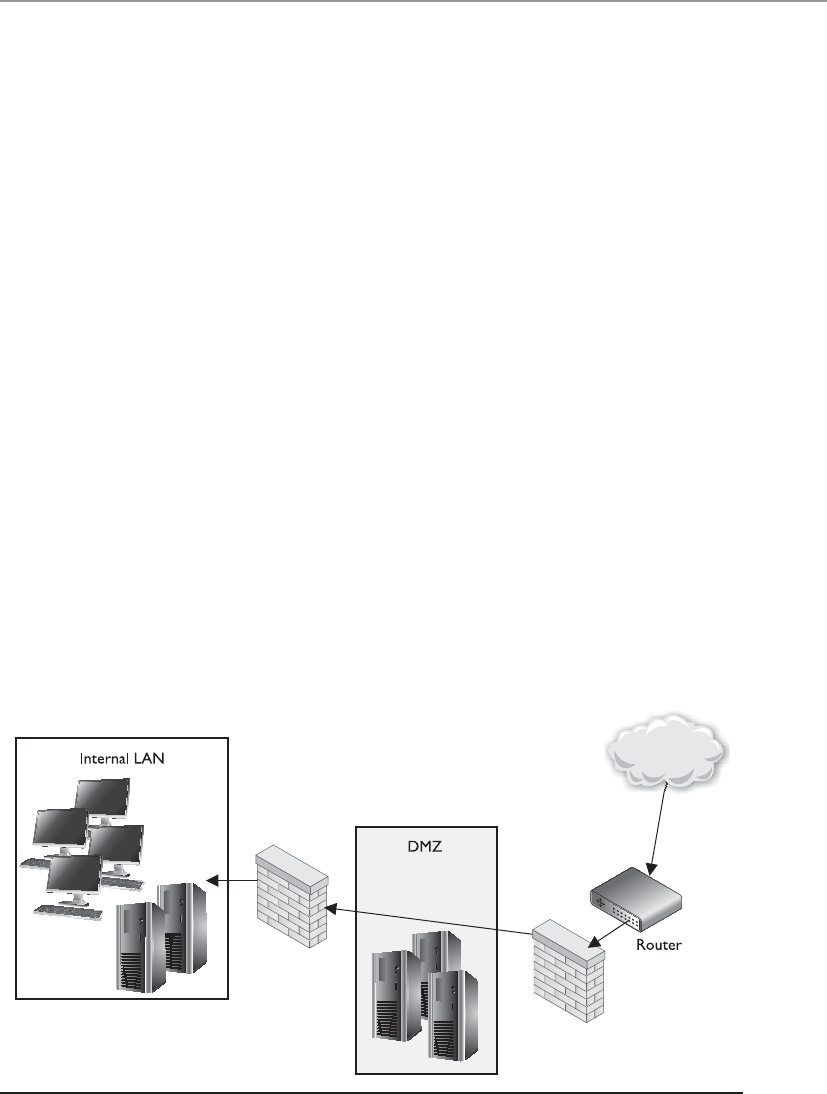

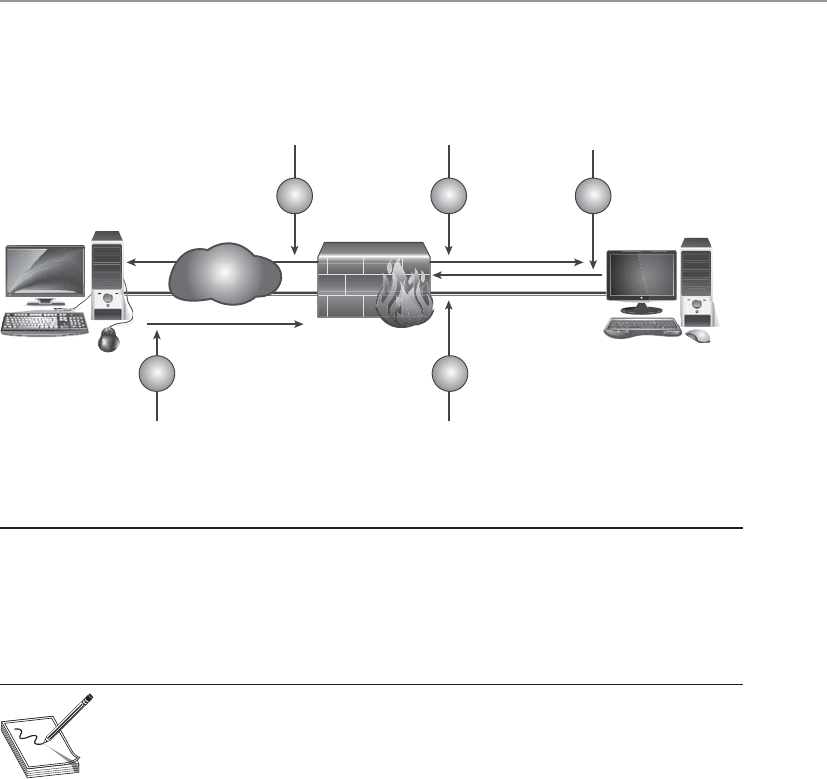

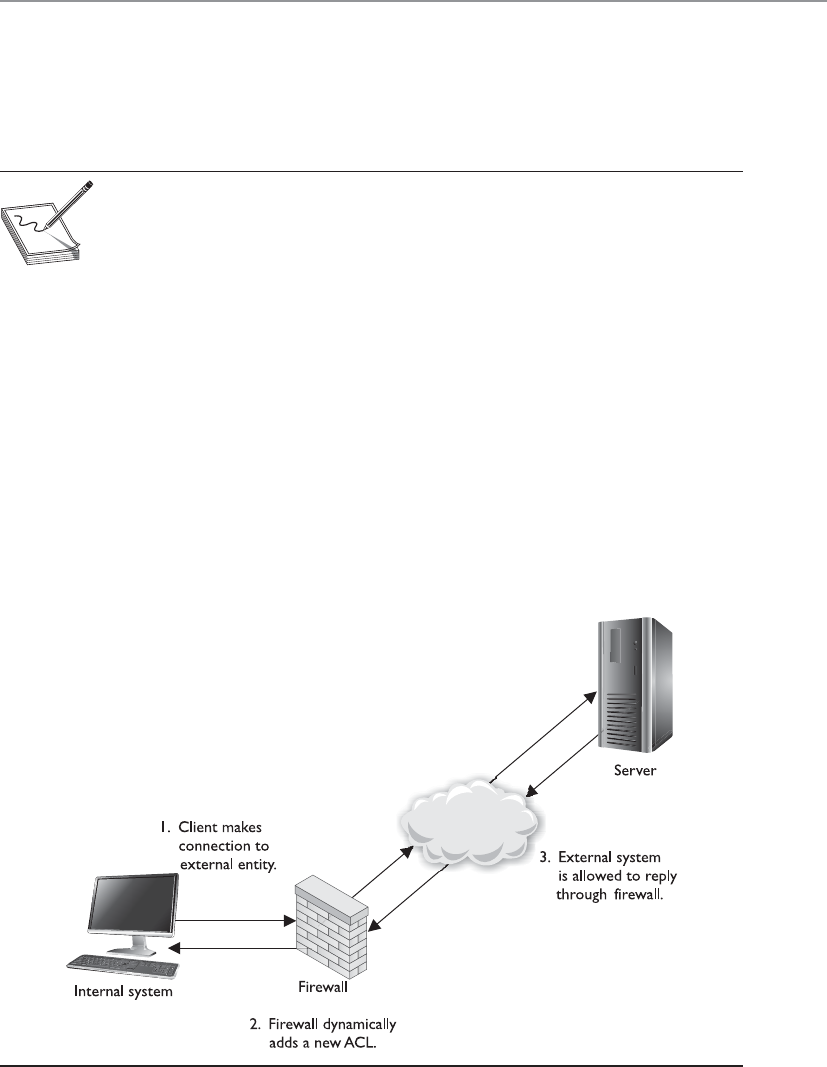

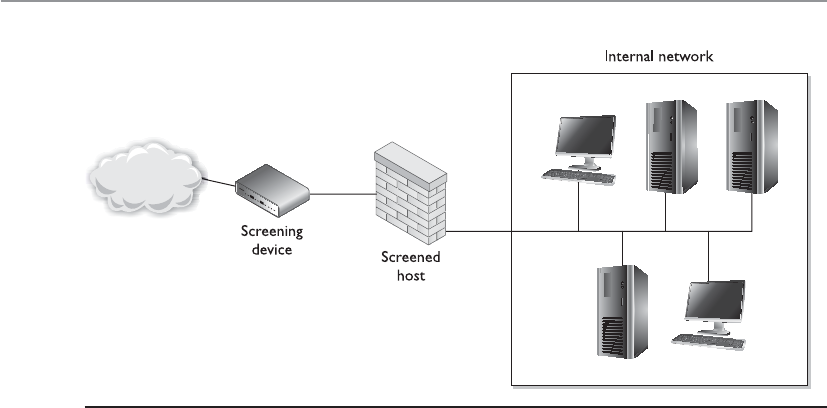

Firewalls . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 628

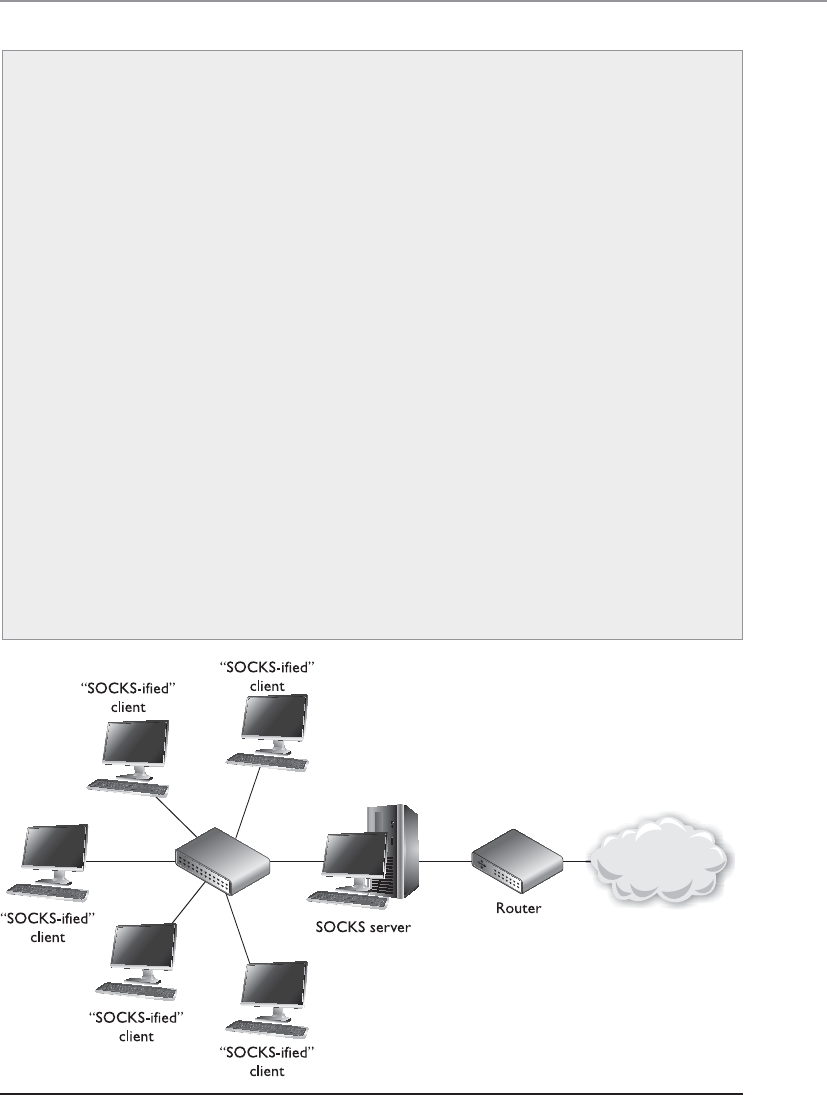

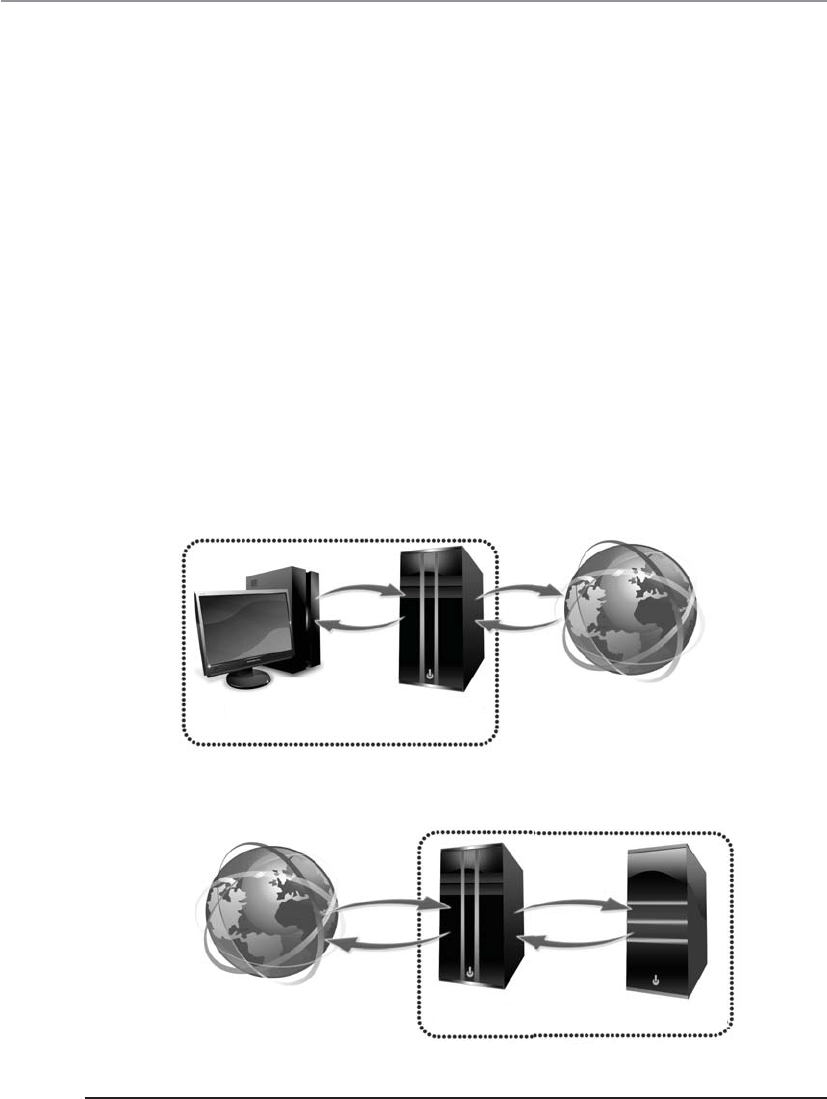

Proxy Servers . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 653

Honeypot . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 655

Unified Threat Management . . . . . . . . . . . . . . . . . . . . . . . . . . 656

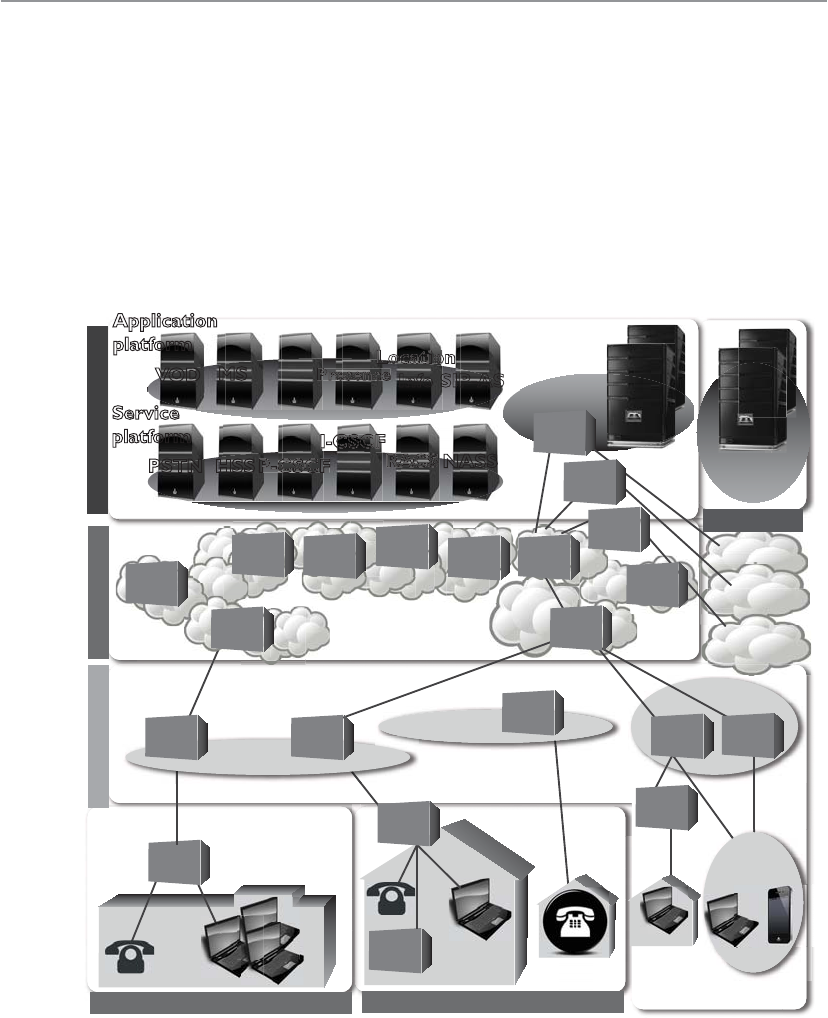

Cloud Computing . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 657

Intranets and Extranets . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 660

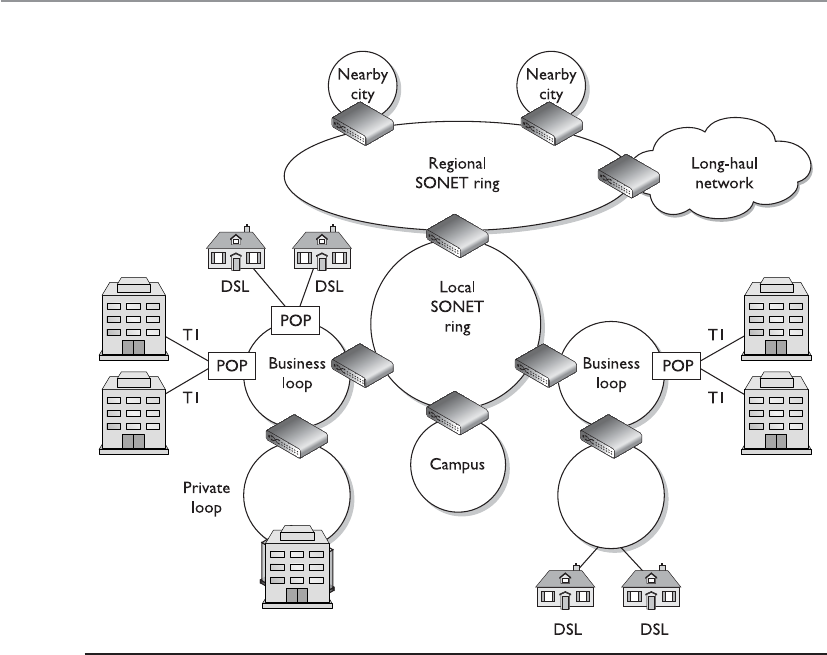

Metropolitan Area Networks . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 663

Wide Area Networks . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 665

Telecommunications Evolution . . . . . . . . . . . . . . . . . . . . . . . 666

Dedicated Links . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 669

WAN Technologies . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 673

Contents

xiii

Remote Connectivity . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 695

Dial-up Connections . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 695

ISDN . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 697

DSL . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 698

Cable Modems . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 700

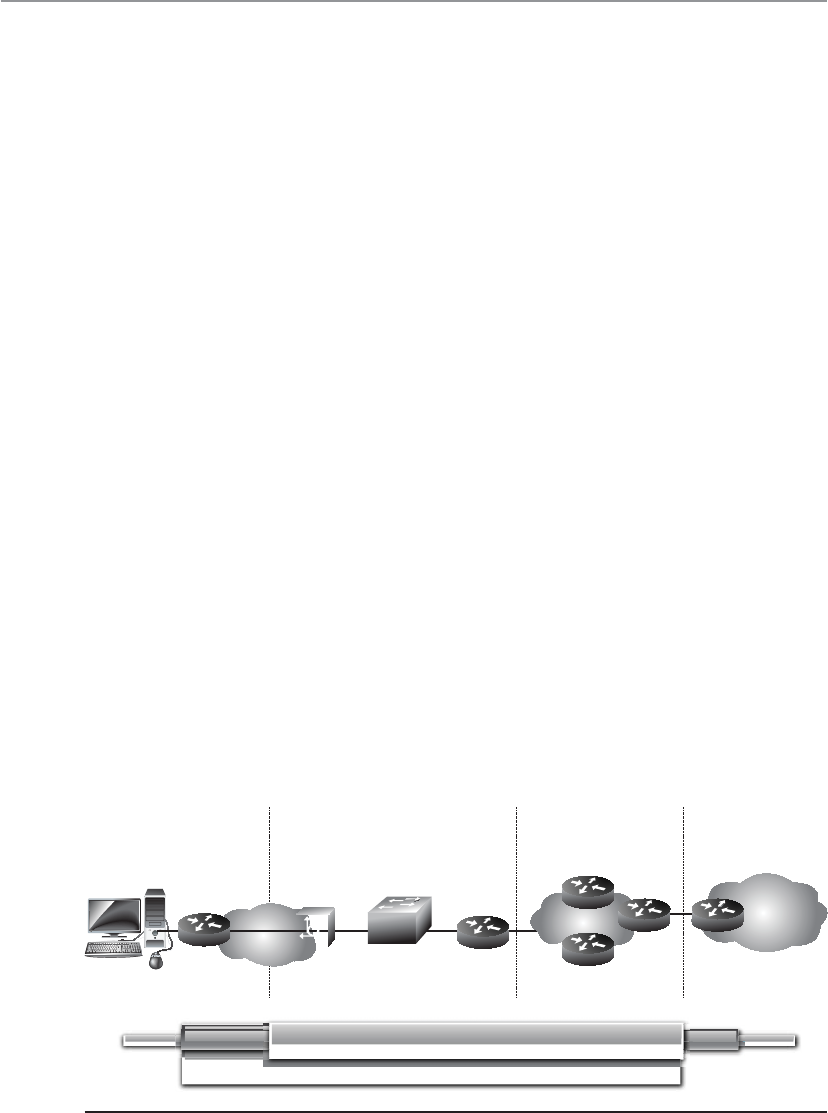

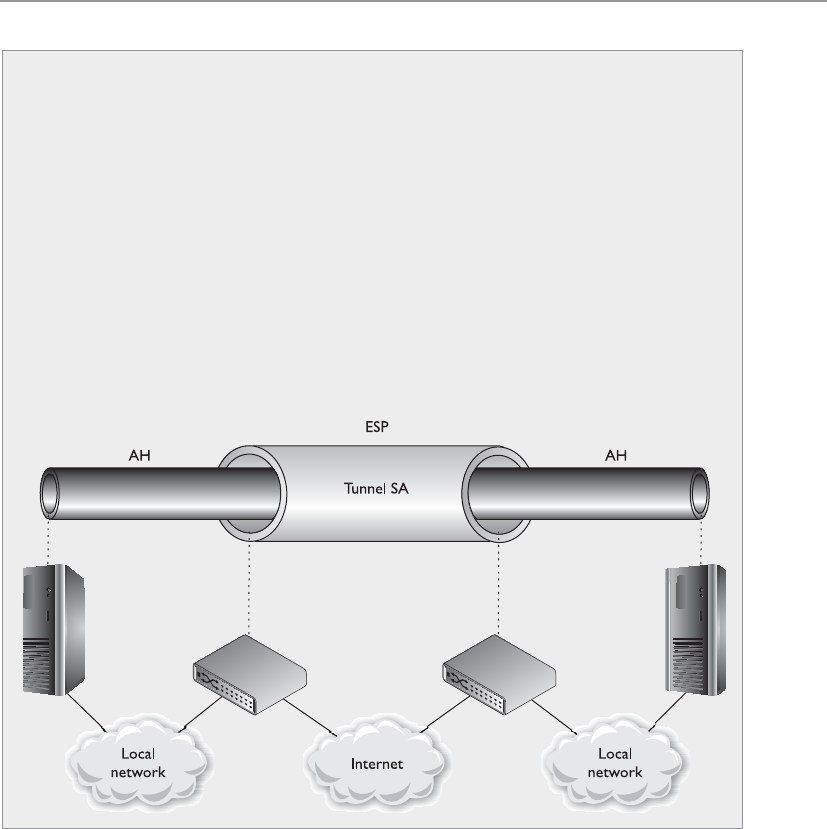

VPN . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 702

Authentication Protocols . . . . . . . . . . . . . . . . . . . . . . . . . . . . 709

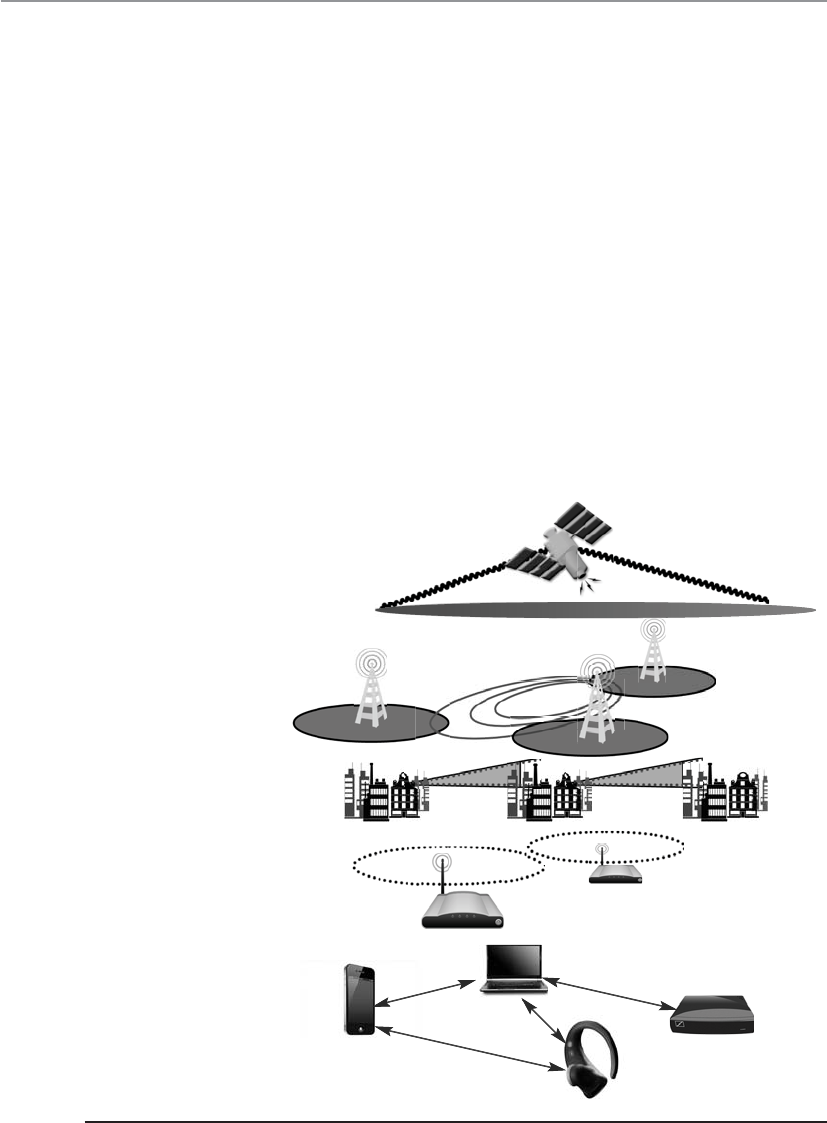

Wireless Technologies . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 712

Wireless Communications . . . . . . . . . . . . . . . . . . . . . . . . . . . 712

WLAN Components . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 716

Wireless Standards . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 723

War Driving for WLANs . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 728

Satellites . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 729

Mobile Wireless Communication . . . . . . . . . . . . . . . . . . . . . . 730

Mobile Phone Security . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 736

Summary . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 739

Quick Tips . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 740

Questions . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 744

Answers . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 753

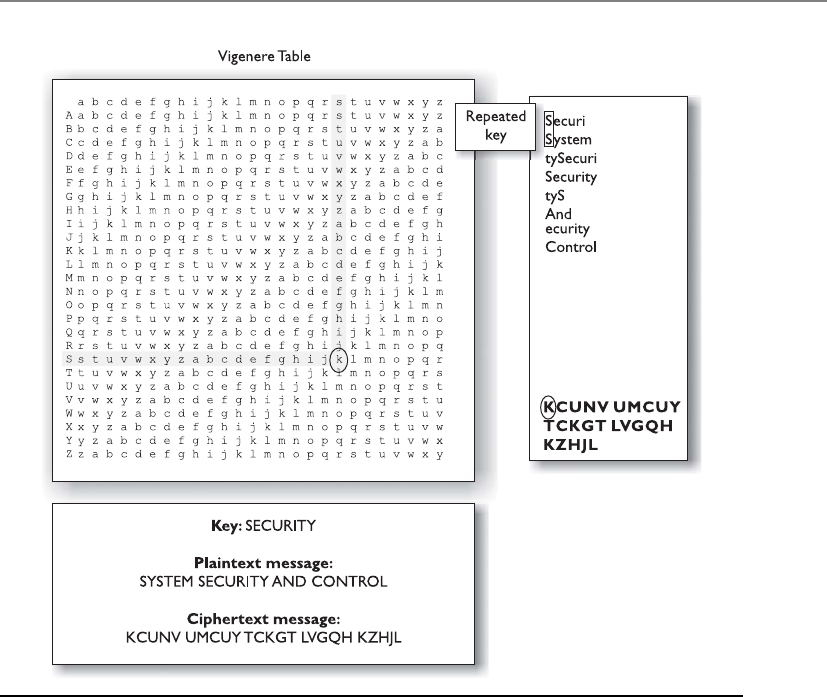

Chapter 7 Cryptography . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 759

The History of Cryptography . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 760

Cryptography Definitions and Concepts . . . . . . . . . . . . . . . . . . . . . 765

Kerckhoffs’ Principle . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 767

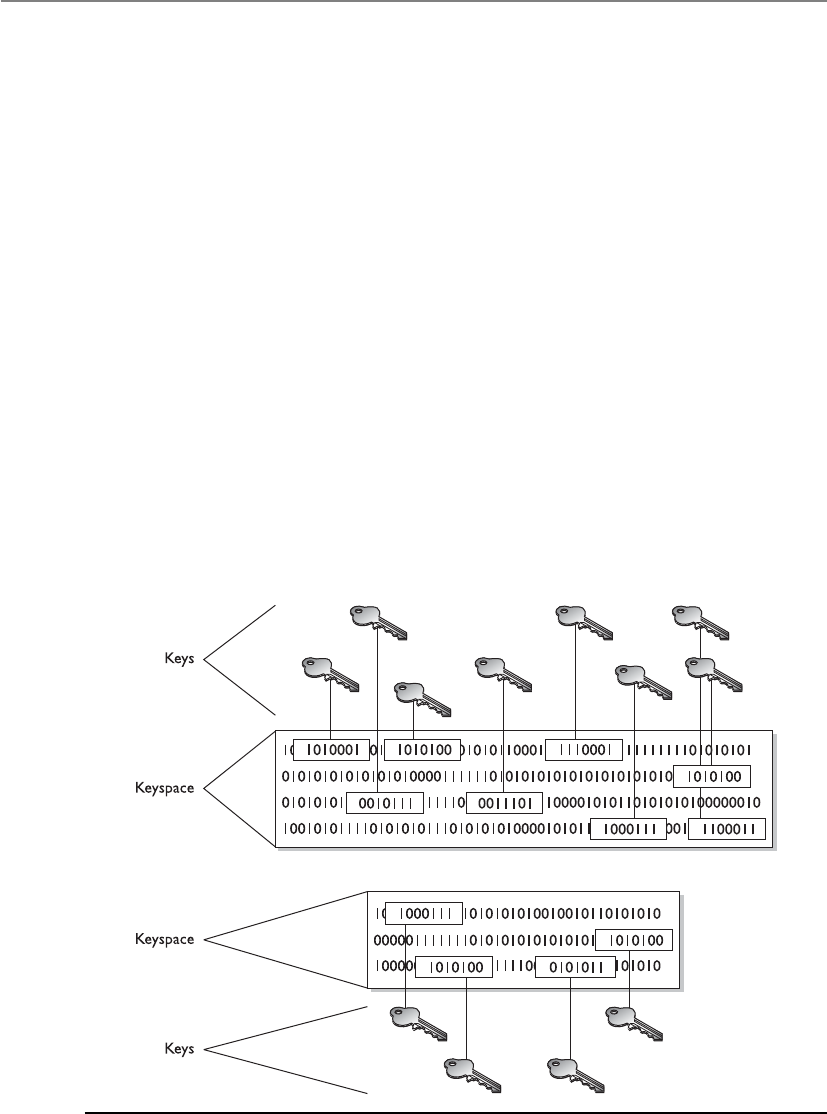

The Strength of the Cryptosystem . . . . . . . . . . . . . . . . . . . . . . 768

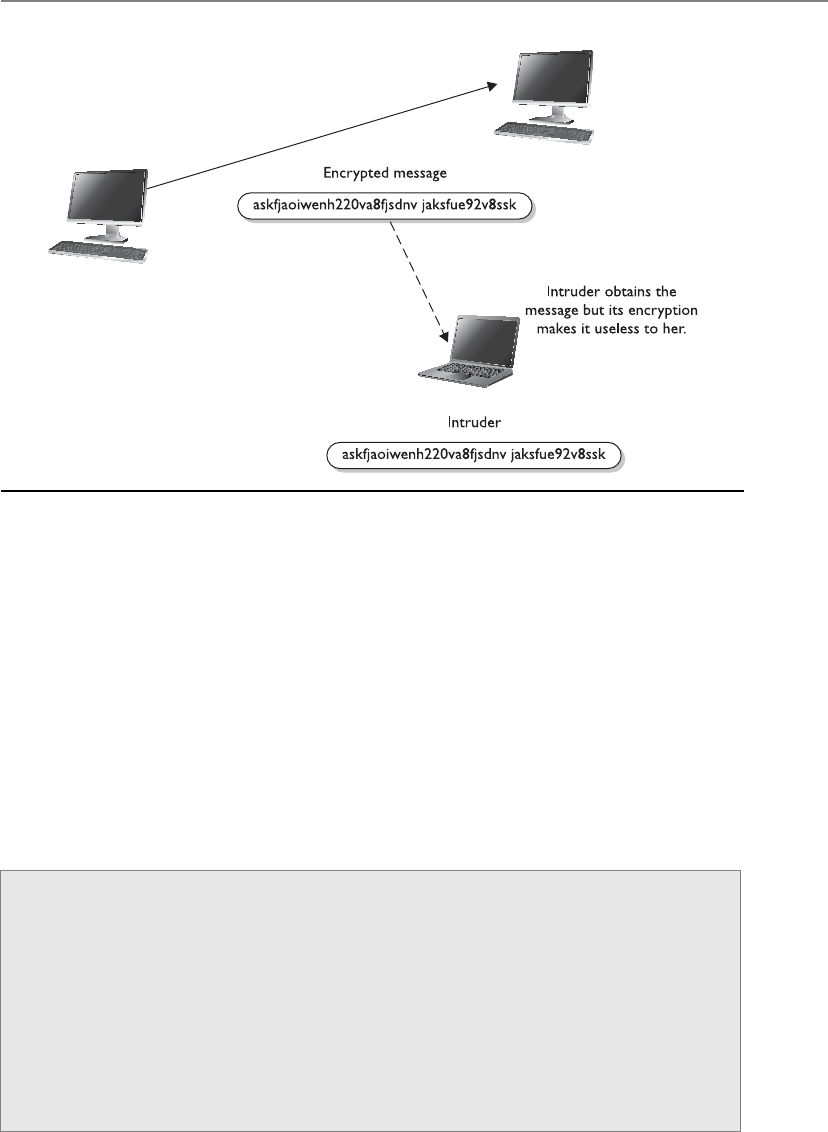

Services of Cryptosystems . . . . . . . . . . . . . . . . . . . . . . . . . . . . 769

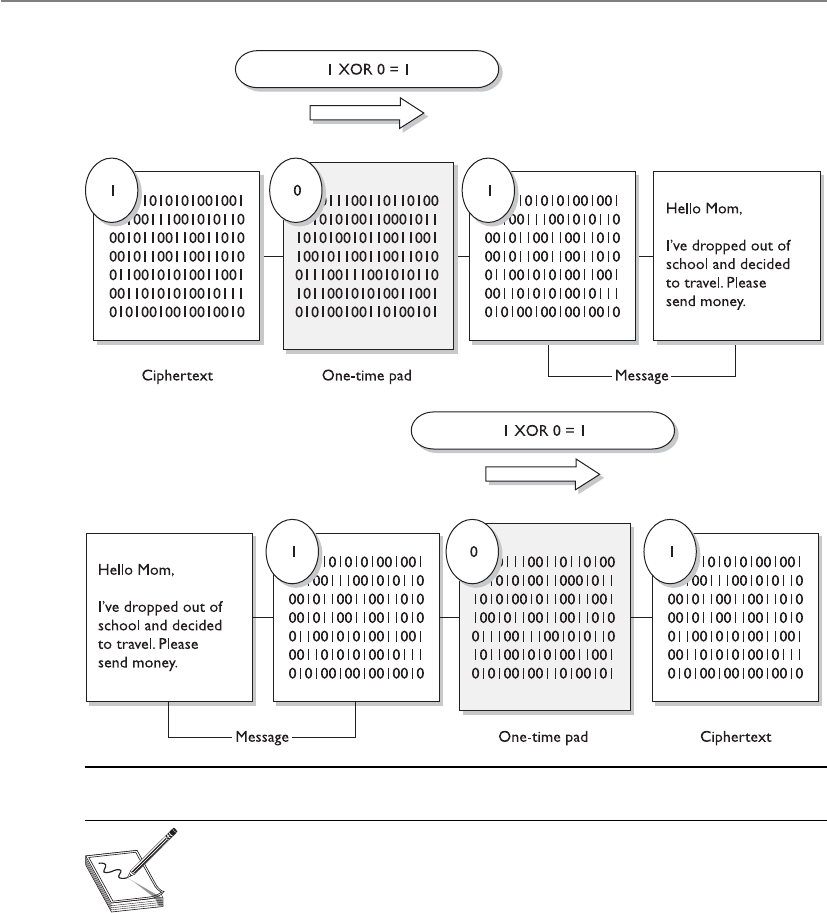

One-Time Pad . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 771

Running and Concealment Ciphers . . . . . . . . . . . . . . . . . . . . 773

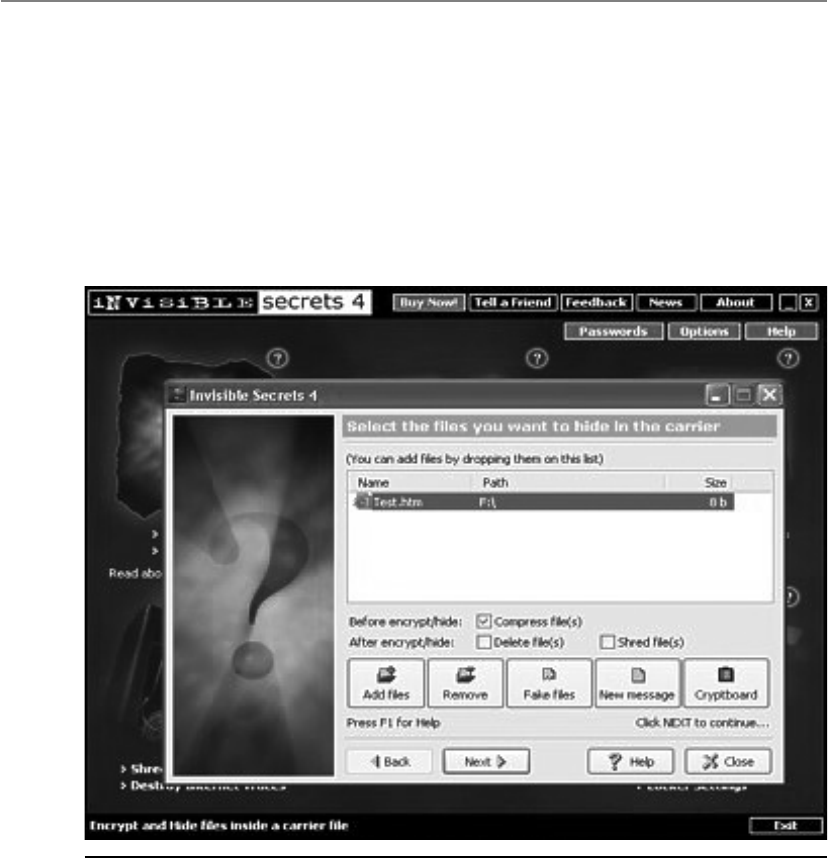

Steganography . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 774

Types of Ciphers . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 777

Substitution Ciphers . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 778

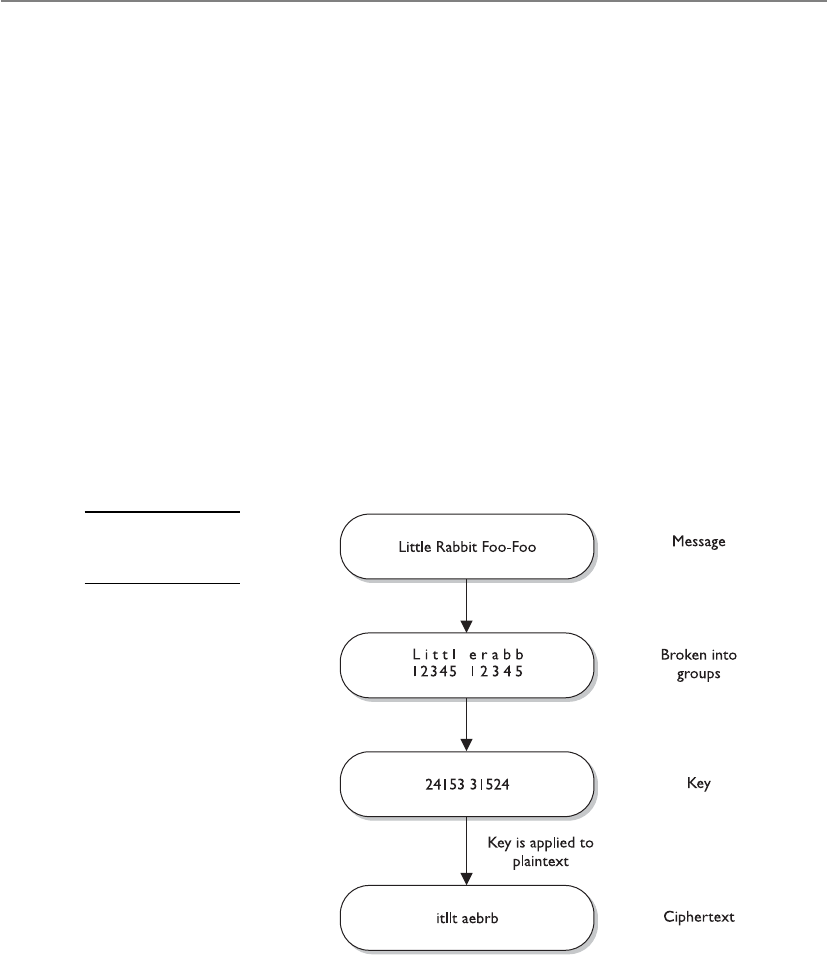

Transposition Ciphers . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 778

Methods of Encryption . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 781

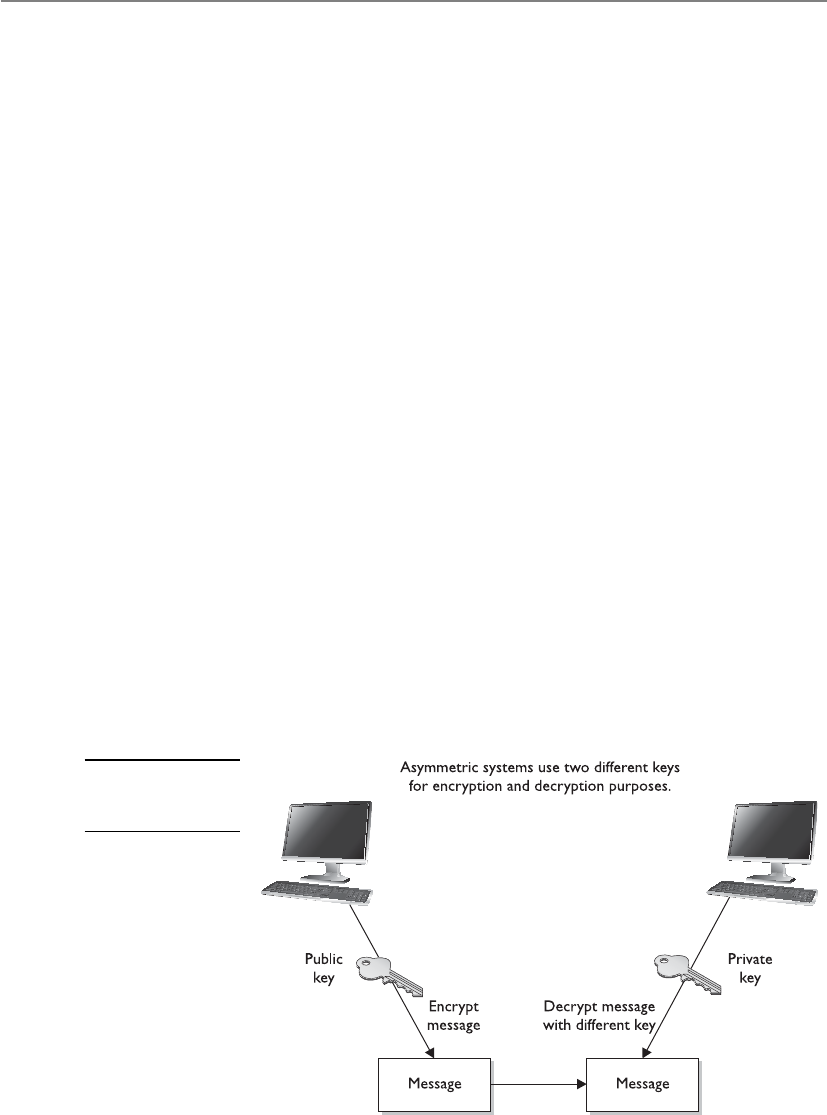

Symmetric vs. Asymmetric Algorithms . . . . . . . . . . . . . . . . . . 782

Symmetric Cryptography . . . . . . . . . . . . . . . . . . . . . . . . . . . . 782

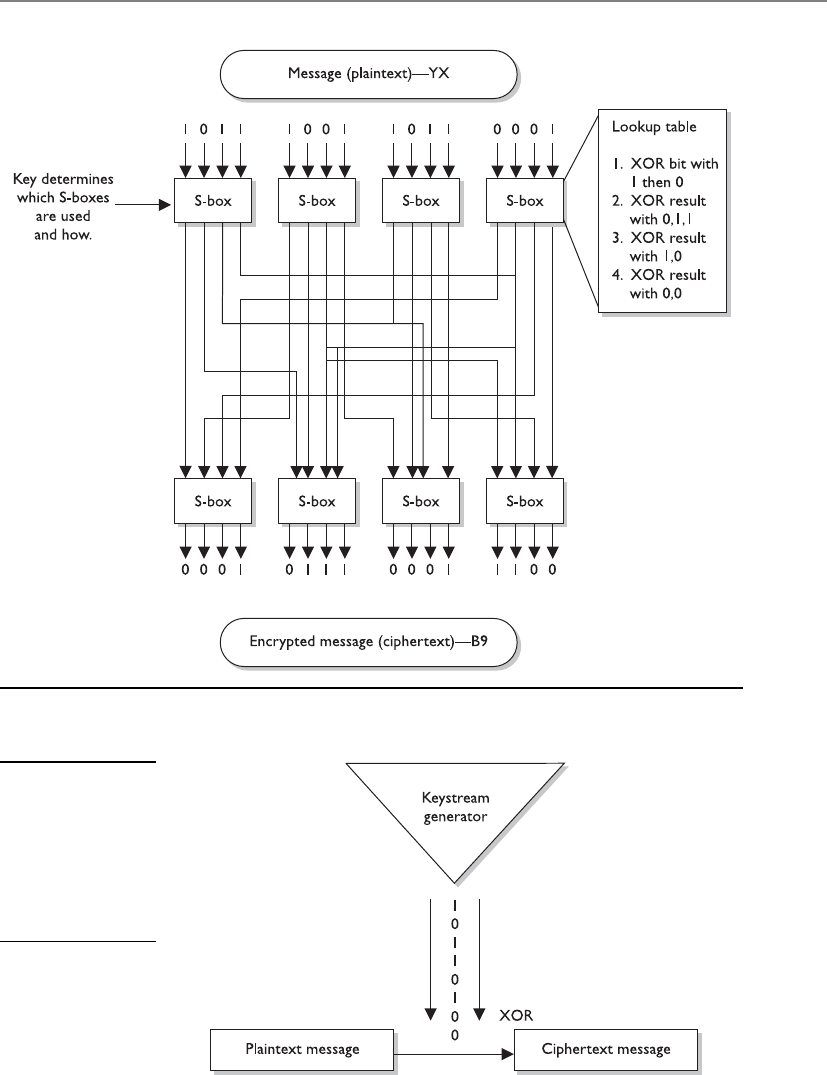

Block and Stream Ciphers . . . . . . . . . . . . . . . . . . . . . . . . . . . . 787

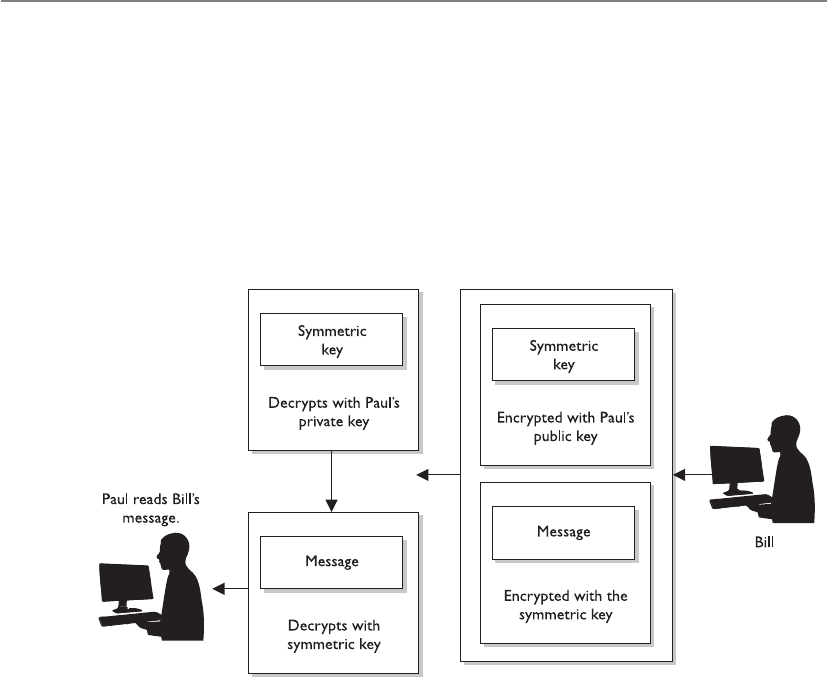

Hybrid Encryption Methods . . . . . . . . . . . . . . . . . . . . . . . . . . 792

Types of Symmetric Systems . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 800

Data Encryption Standard . . . . . . . . . . . . . . . . . . . . . . . . . . . . 800

Triple-DES . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 808

The Advanced Encryption Standard . . . . . . . . . . . . . . . . . . . . 809

International Data Encryption Algorithm . . . . . . . . . . . . . . . 809

Blowfish . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 810

RC4 . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 810

RC5 . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 810

RC6 . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 810

CISSP All-in-One Exam Guide

xiv

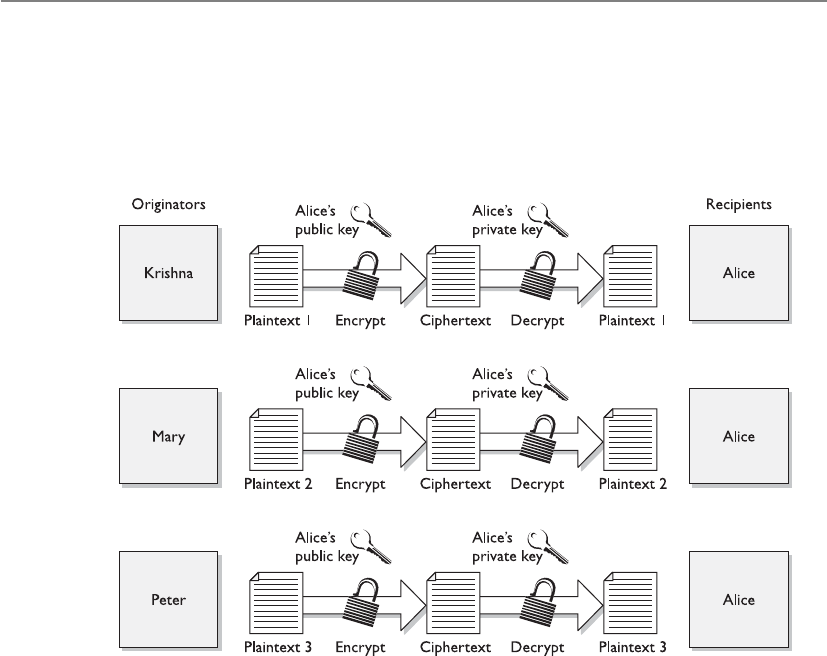

Types of Asymmetric Systems . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 812

The Diffie-Hellman Algorithm . . . . . . . . . . . . . . . . . . . . . . . . 812

RSA . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 815

El Gamal . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 818

Elliptic Curve Cryptosystems . . . . . . . . . . . . . . . . . . . . . . . . . 818

Knapsack . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 819

Zero Knowledge Proof . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 819

Message Integrity . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 820

The One-Way Hash . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 820

Various Hashing Algorithms . . . . . . . . . . . . . . . . . . . . . . . . . . 826

MD2 . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 826

MD4 . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 826

MD5 . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 827

Attacks Against One-Way Hash Functions . . . . . . . . . . . . . . . 827

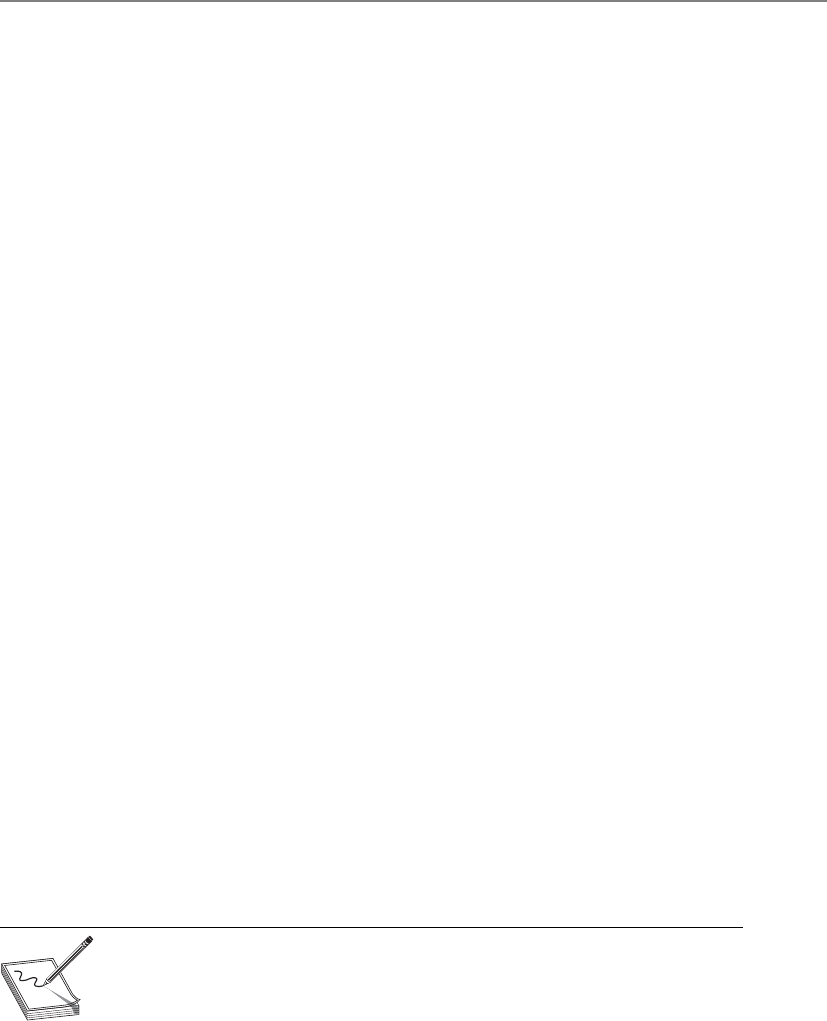

Digital Signatures . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 829

Digital Signature Standard . . . . . . . . . . . . . . . . . . . . . . . . . . . 832

Public Key Infrastructure . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 833

Certificate Authorities . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 834

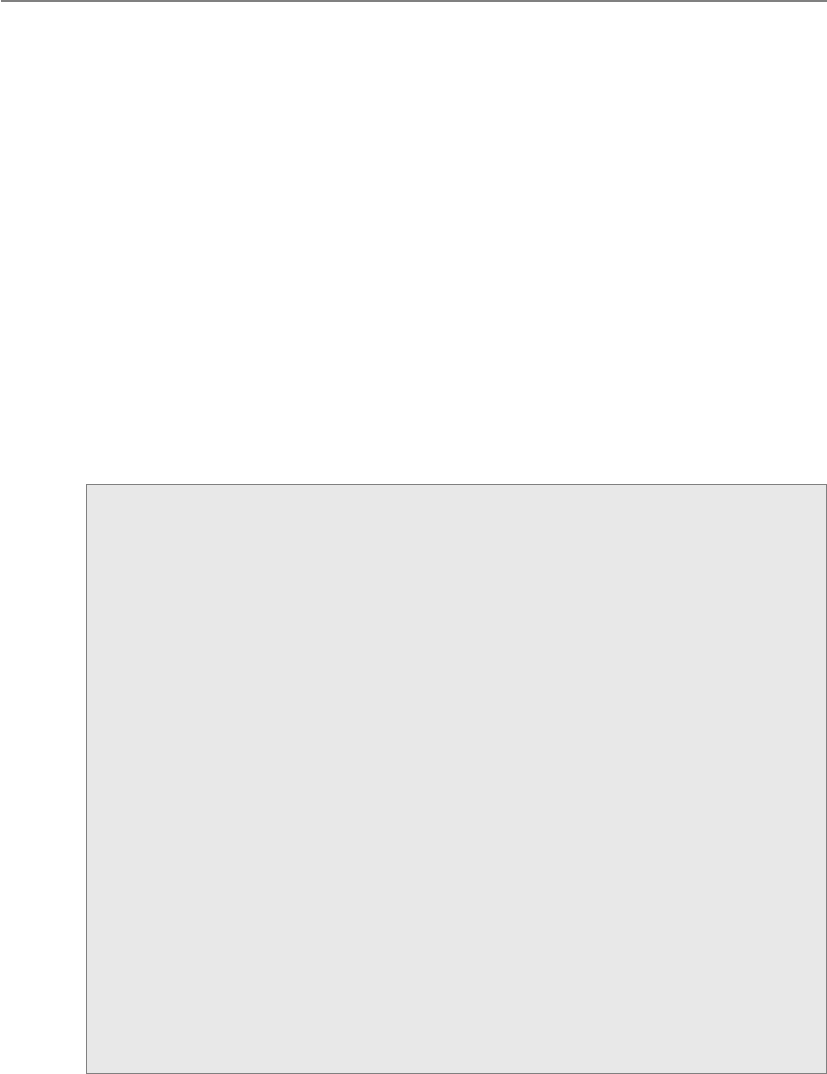

Certificates . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 837

The Registration Authority . . . . . . . . . . . . . . . . . . . . . . . . . . . 837

PKI Steps . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 838

Key Management . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 840

Key Management Principles . . . . . . . . . . . . . . . . . . . . . . . . . . 841

Rules for Keys and Key Management . . . . . . . . . . . . . . . . . . . 842

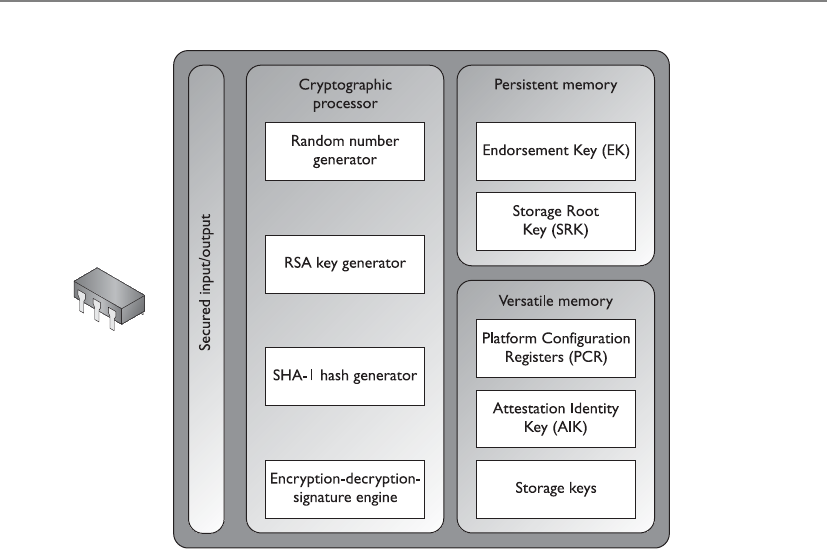

Trusted Platform Module . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 843

TPM Uses . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 843

Link Encryption vs. End-to-End Encryption . . . . . . . . . . . . . . . . . . . 845

E-mail Standards . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 849

Multipurpose Internet Mail Extension . . . . . . . . . . . . . . . . . . 849

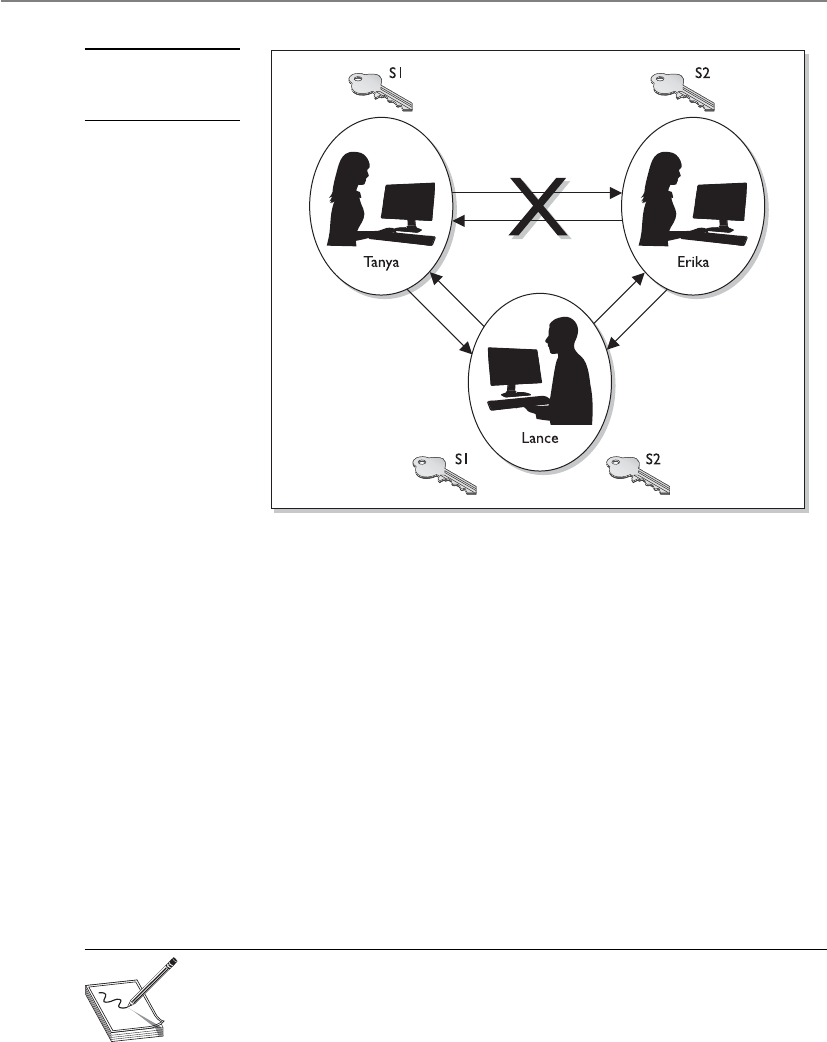

Pretty Good Privacy . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 850

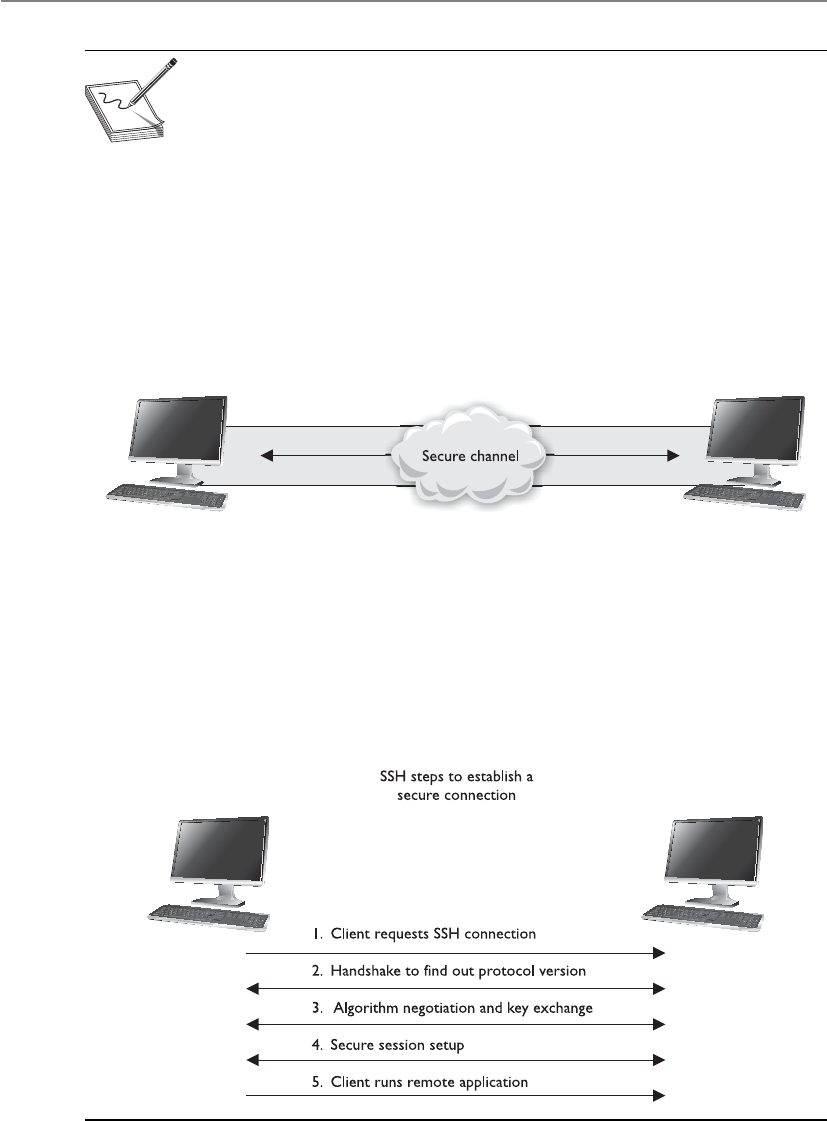

Internet Security . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 853

Start with the Basics . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 854

Attacks . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 865

Ciphertext-Only Attacks . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 865

Known-Plaintext Attacks . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 865

Chosen-Plaintext Attacks . . . . . . . . . . . . . . . . . . . . . . . . . . . . 866

Chosen-Ciphertext Attacks . . . . . . . . . . . . . . . . . . . . . . . . . . . 866

Differential Cryptanalysis . . . . . . . . . . . . . . . . . . . . . . . . . . . . 866

Linear Cryptanalysis . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 867

Side-Channel Attacks . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 867

Replay Attacks . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 868

Algebraic Attacks . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 868

Analytic Attacks . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 868

Statistical Attacks . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 869

Social Engineering Attacks . . . . . . . . . . . . . . . . . . . . . . . . . . . 869

Meet-in-the-Middle Attacks . . . . . . . . . . . . . . . . . . . . . . . . . . . 869

Summary . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 870

Quick Tips . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 871

Questions . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 874

Answers . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 880

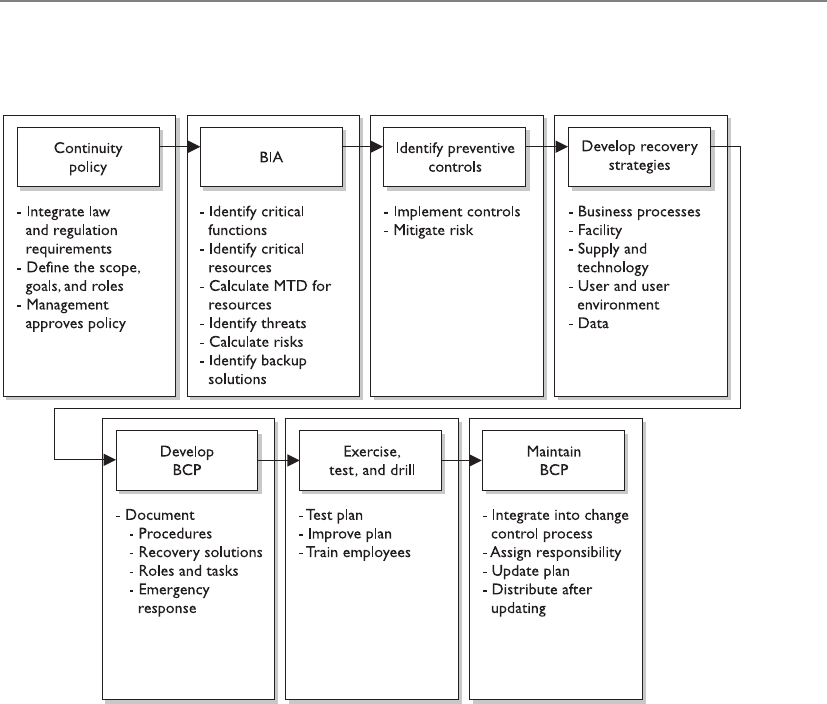

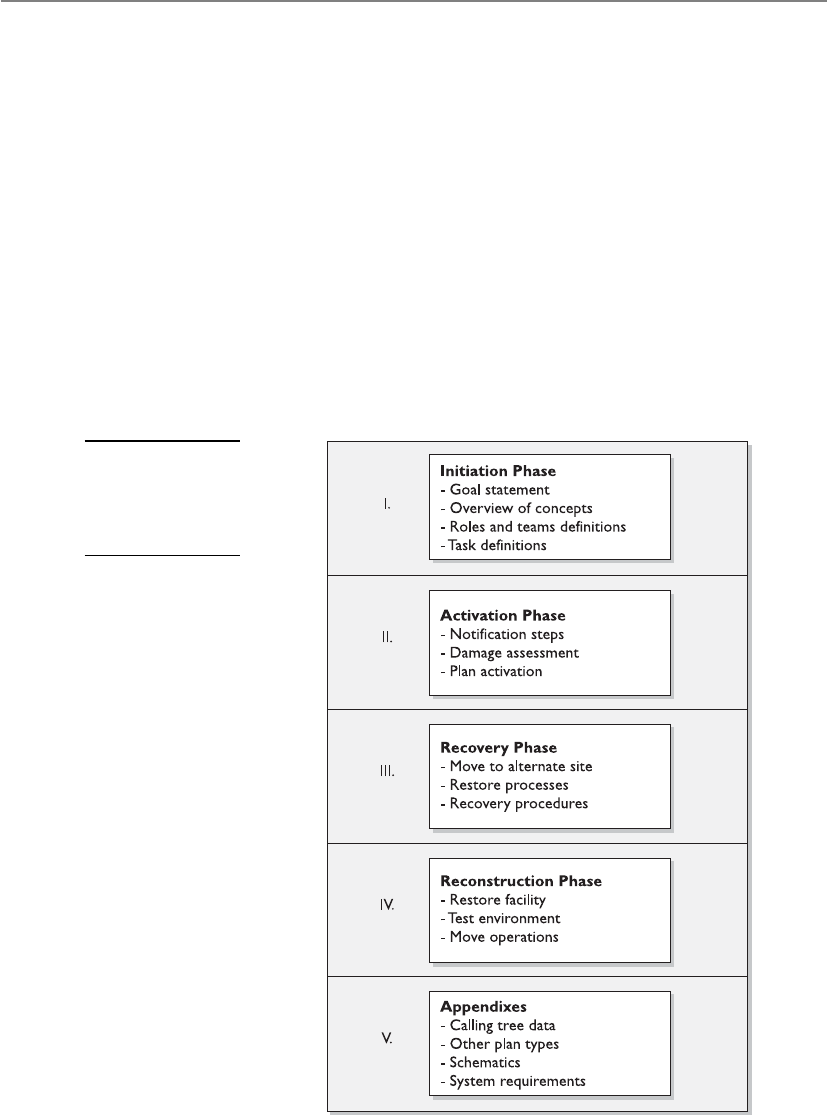

Chapter 8 Business Continuity and Disaster Recovery Planning . . . . . . . . . . . 885

Business Continuity and Disaster Recovery . . . . . . . . . . . . . . . . . . . 887

Standards and Best Practices . . . . . . . . . . . . . . . . . . . . . . . . . 890

Making BCM Part of the Enterprise Security Program . . . . . . 893

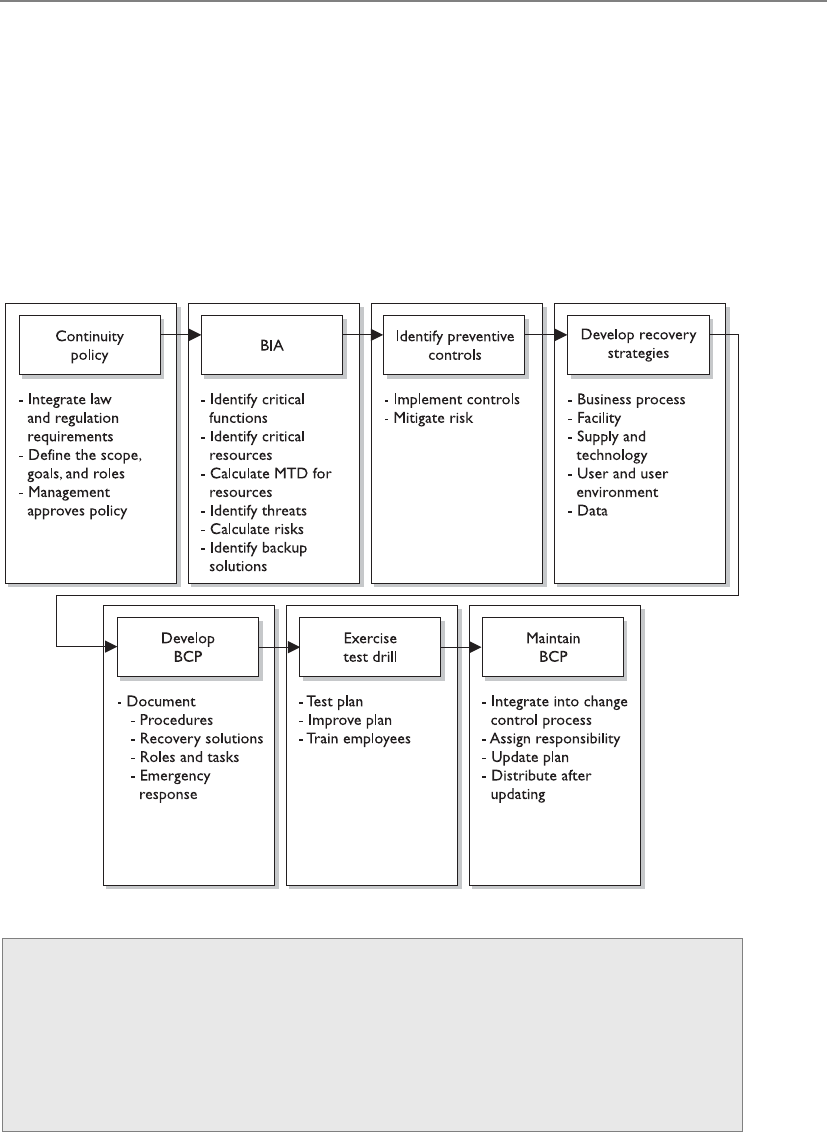

BCP Project Components . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 897

Scope of the Project . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 899

BCP Policy . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 901

Project Management . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 901

Business Continuity Planning Requirements . . . . . . . . . . . . . 904

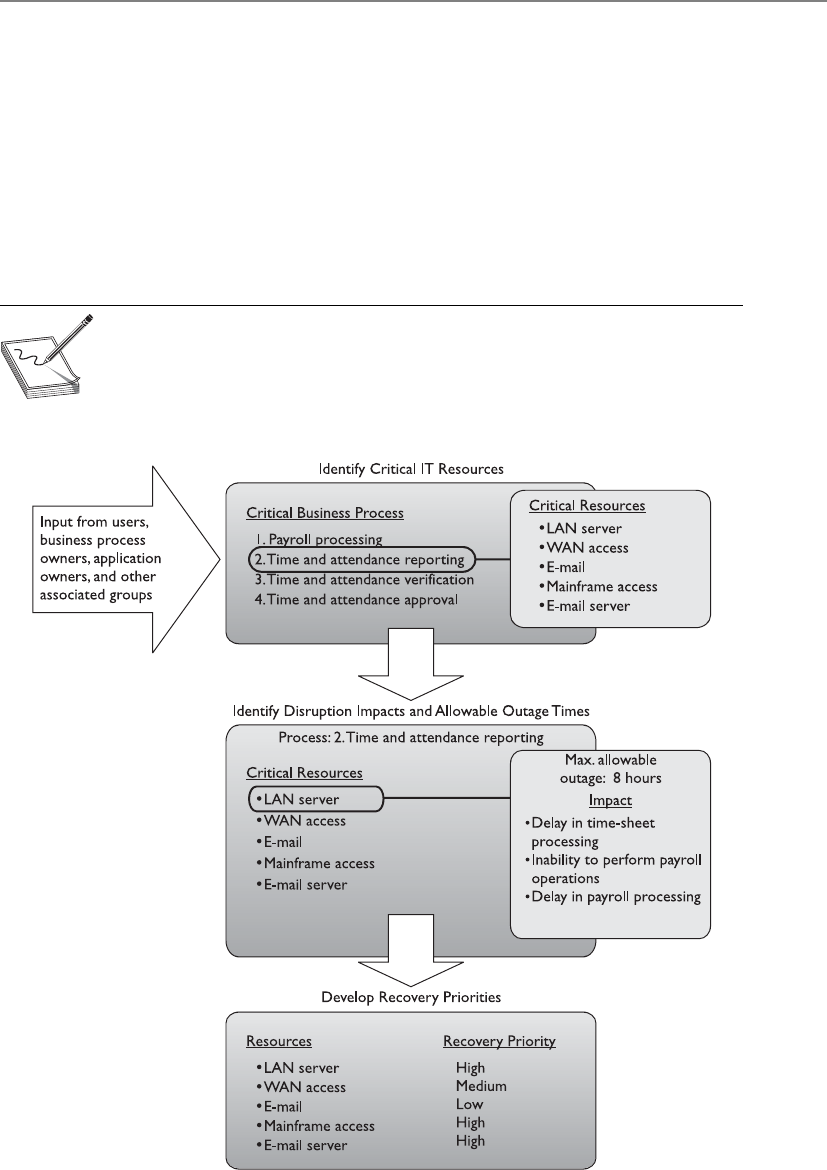

Business Impact Analysis (BIA) . . . . . . . . . . . . . . . . . . . . . . . 905

Interdependencies . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 912

Preventive Measures . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 913

Recovery Strategies . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 914

Business Process Recovery . . . . . . . . . . . . . . . . . . . . . . . . . . . 918

Facility Recovery . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 919

Supply and Technology Recovery . . . . . . . . . . . . . . . . . . . . . . 926

Choosing a Software Backup Facility . . . . . . . . . . . . . . . . . . . 930

End-User Environment . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 933

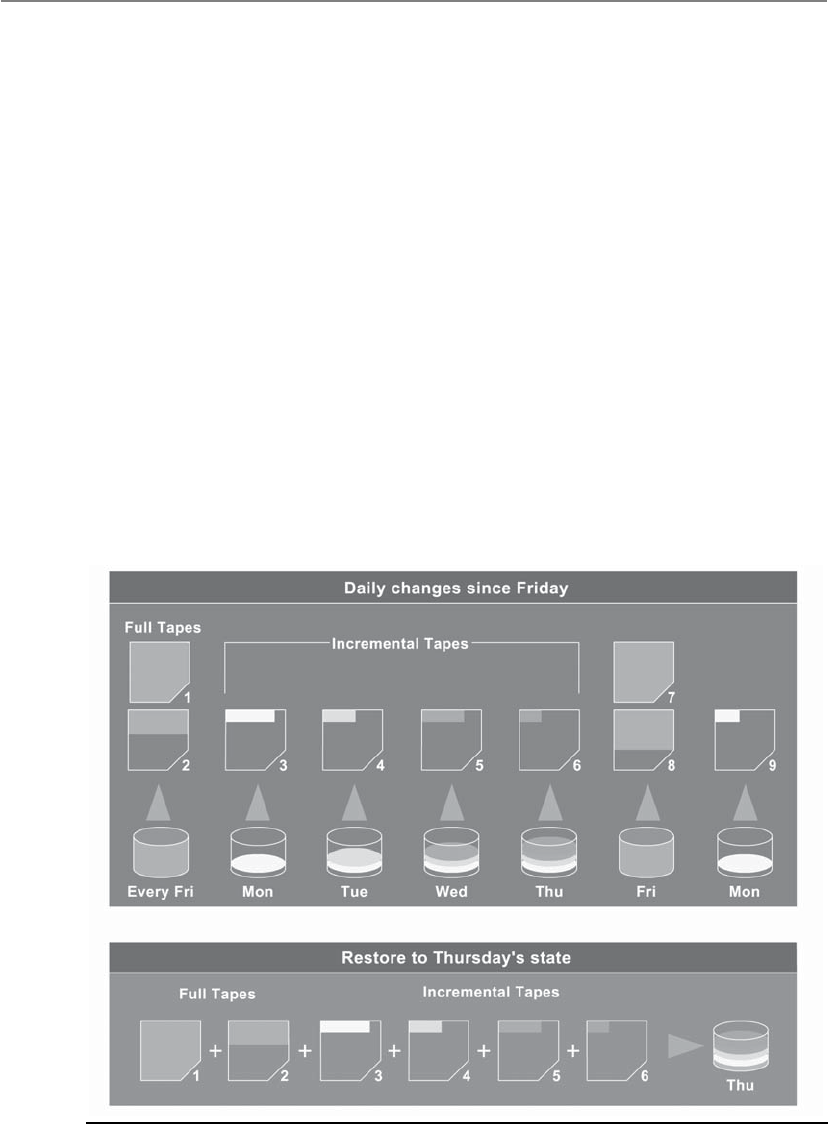

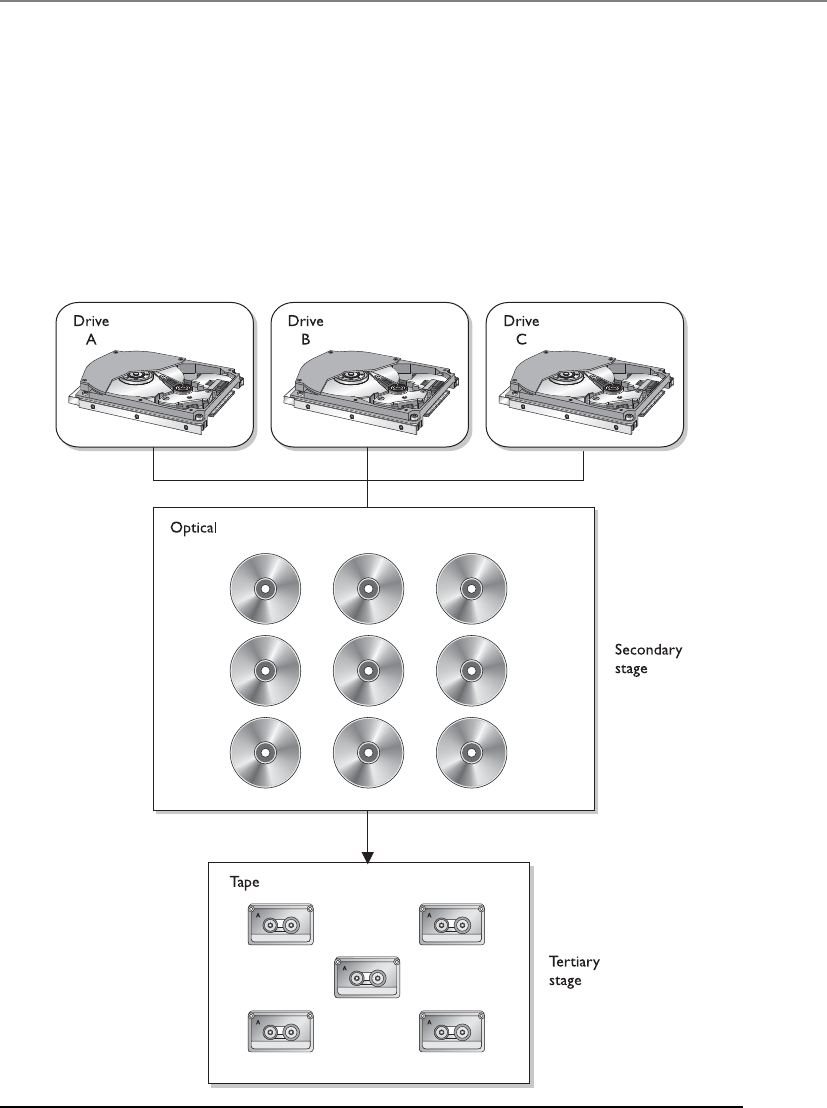

Data Backup Alternatives . . . . . . . . . . . . . . . . . . . . . . . . . . . . 934

Electronic Backup Solutions . . . . . . . . . . . . . . . . . . . . . . . . . . 938

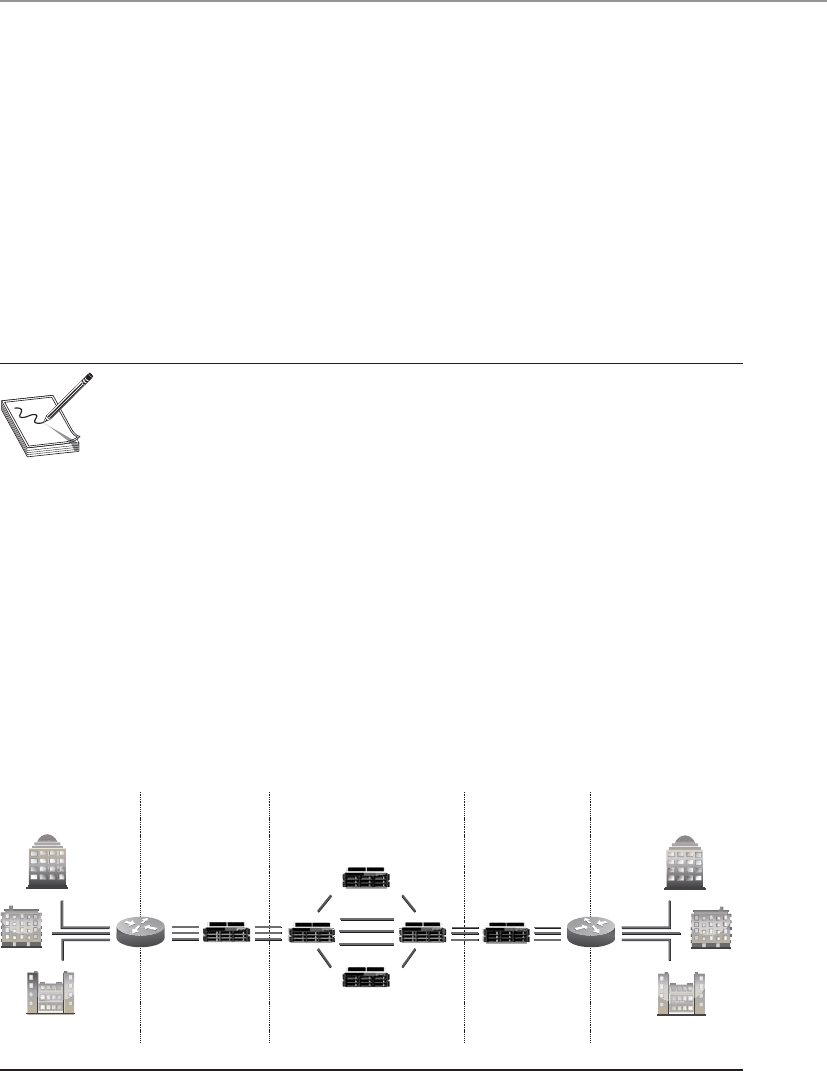

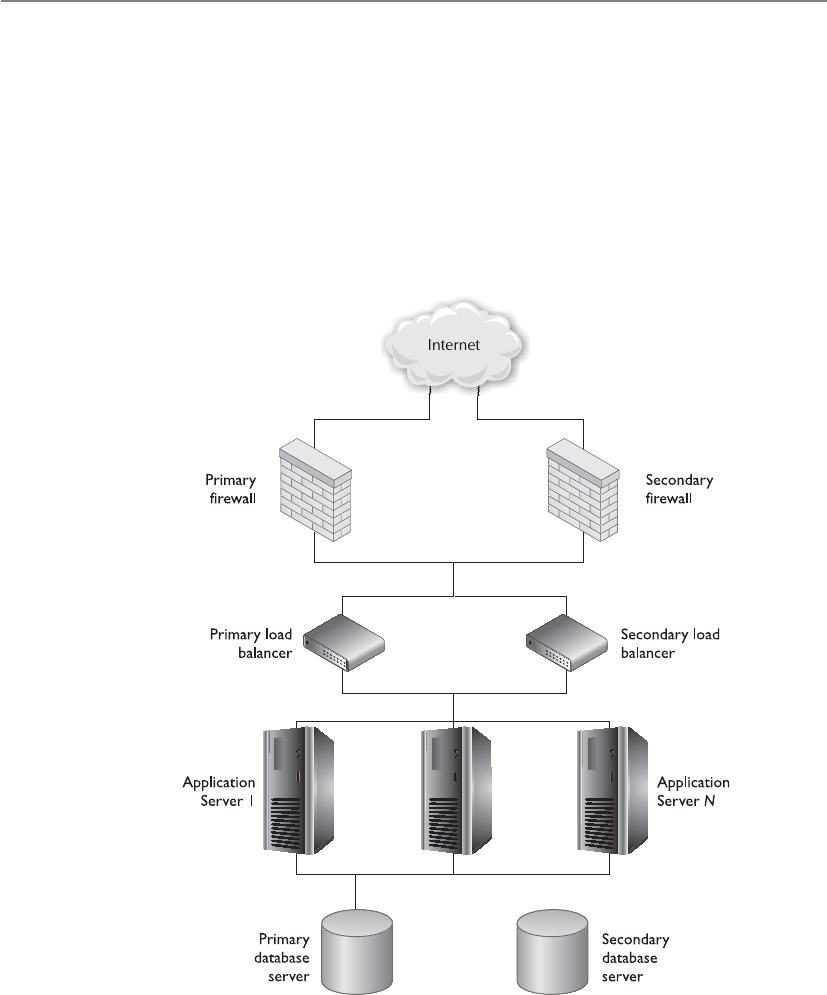

High Availability . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 941

Insurance . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 944

Recovery and Restoration . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 945

Developing Goals for the Plans . . . . . . . . . . . . . . . . . . . . . . . 949

Implementing Strategies . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 951

Testing and Revising the Plan . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 953

Checklist Test . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 955

Structured Walk-Through Test . . . . . . . . . . . . . . . . . . . . . . . . 955

Simulation Test . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 955

Parallel Test . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 955

Full-Interruption Test . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 956

Other Types of Training . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 956

Emergency Response . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 956

Maintaining the Plan . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 958

Summary . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 961

Quick Tips . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 961

Questions . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 964

Answers . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 972

Chapter 9 Legal, Regulations, Investigations, and Compliance . . . . . . . . . . . . . 979

The Many Facets of Cyberlaw . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 980

The Crux of Computer Crime Laws . . . . . . . . . . . . . . . . . . . . . . . . . 981

Complexities in Cybercrime . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 983

Electronic Assets . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 985

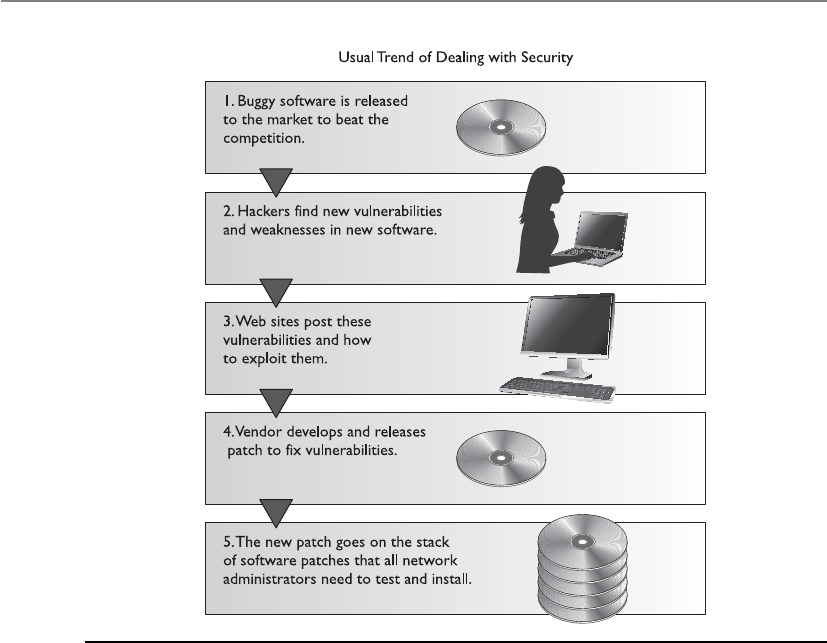

The Evolution of Attacks . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 986

International Issues . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 990

Types of Legal Systems . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 994

Intellectual Property Laws . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 998

Trade Secret . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 999

Copyright . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1000

Trademark . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1001

Patent . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1001

Internal Protection of Intellectual Property . . . . . . . . . . . . . . 1003

Software Piracy . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1004

Privacy . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1006

The Increasing Need for Privacy Laws . . . . . . . . . . . . . . . . . . . 1008

Laws, Directives, and Regulations . . . . . . . . . . . . . . . . . . . . . . 1009

Liability and Its Ramifications . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1022

Personal Information . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1027

Hacker Intrusion . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1027

Third-Party Risk . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1028

Contractual Agreements . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1029

Procurement and Vendor Processes . . . . . . . . . . . . . . . . . . . . 1029

Compliance . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1030

Investigations . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1032

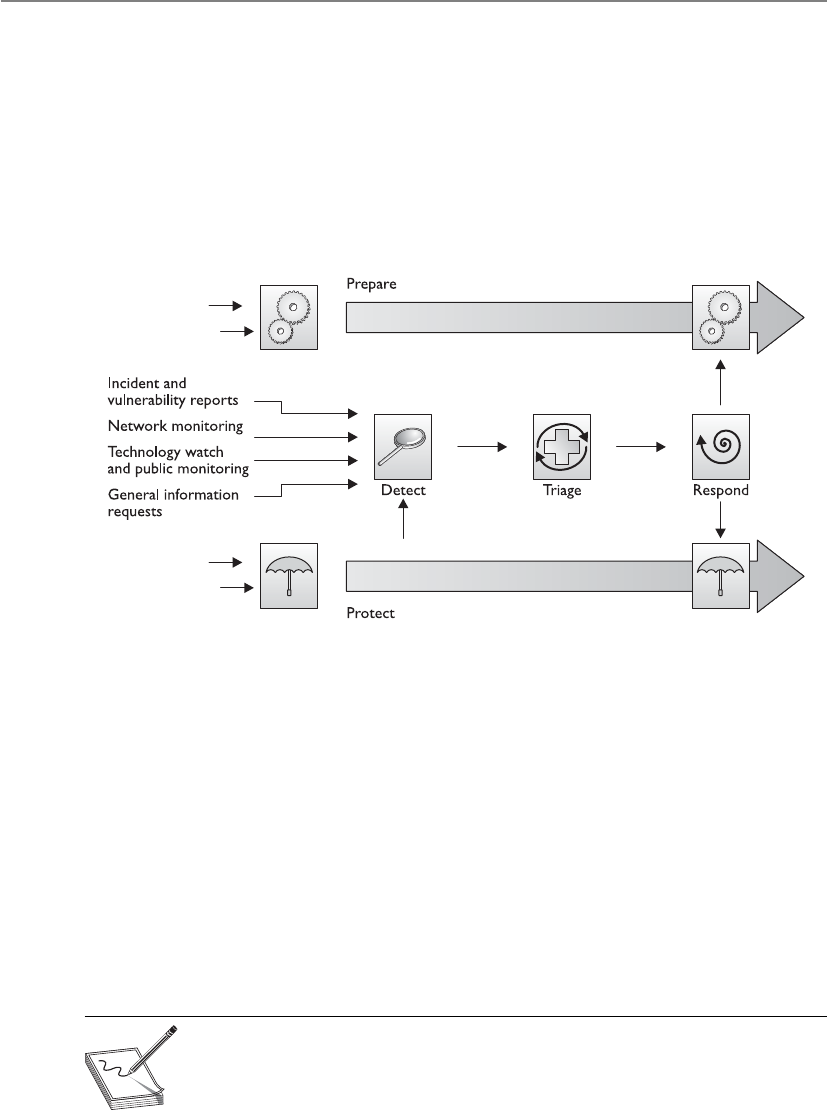

Incident Management . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1033

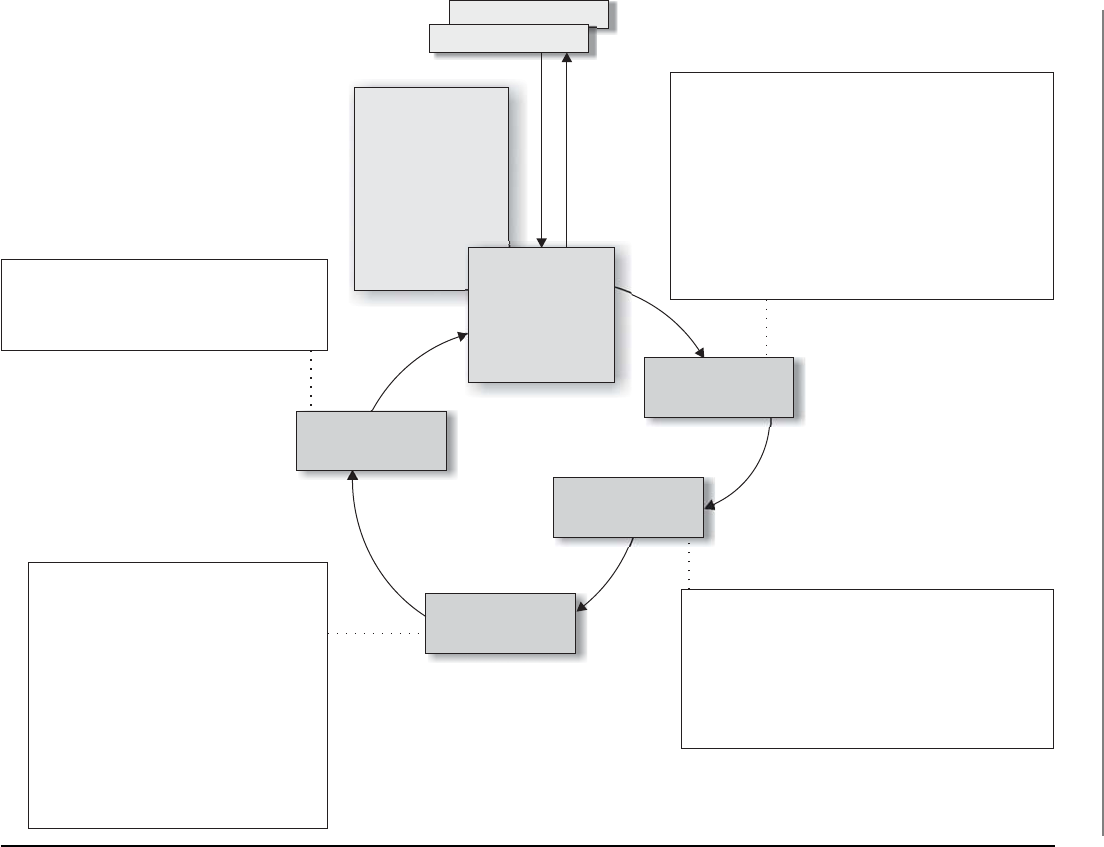

Incident Response Procedures . . . . . . . . . . . . . . . . . . . . . . . . 1037

Computer Forensics and Proper Collection of Evidence . . . . 1042

International Organization on Computer Evidence . . . . . . . . 1043

Motive, Opportunity, and Means . . . . . . . . . . . . . . . . . . . . . . 1044

Computer Criminal Behavior . . . . . . . . . . . . . . . . . . . . . . . . . 1044

Incident Investigators . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1045

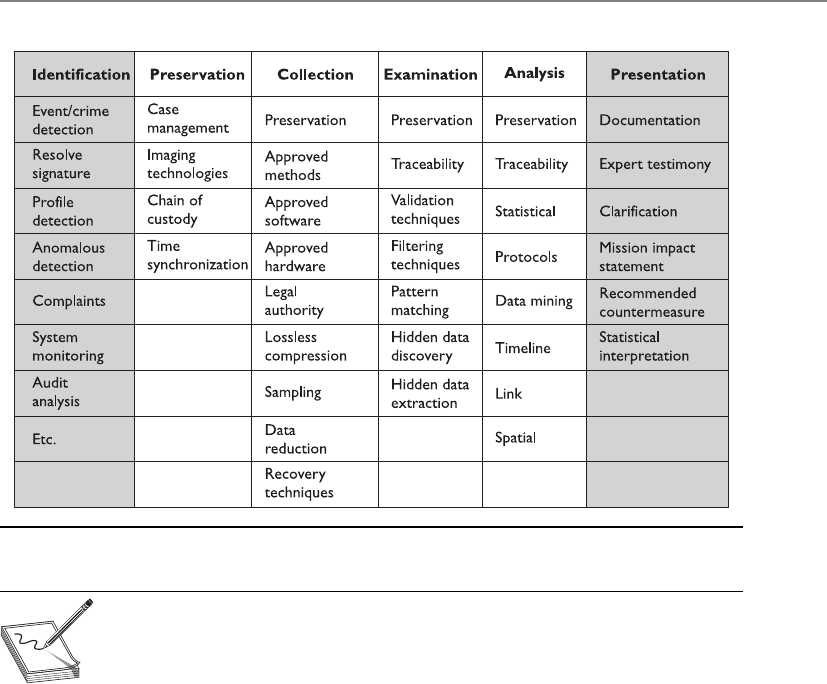

The Forensics Investigation Process . . . . . . . . . . . . . . . . . . . . 1046

What Is Admissible in Court? . . . . . . . . . . . . . . . . . . . . . . . . . 1053

Surveillance, Search, and Seizure . . . . . . . . . . . . . . . . . . . . . . 1057

Interviewing and Interrogating . . . . . . . . . . . . . . . . . . . . . . . . 1058

A Few Different Attack Types . . . . . . . . . . . . . . . . . . . . . . . . . 1058

Cybersquatting . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1061

Ethics . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1061

The Computer Ethics Institute . . . . . . . . . . . . . . . . . . . . . . . . 1062

The Internet Architecture Board . . . . . . . . . . . . . . . . . . . . . . . 1063

Corporate Ethics Programs . . . . . . . . . . . . . . . . . . . . . . . . . . . 1064

Summary . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1065

Quick Tips . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1065

Questions . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1069

Answers . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1076

Chapter 10 Software Development Security . . . . . . . . . . . . . . . . . . . . . . . . . . . 1081

Software’s Importance . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1081

Where Do We Place Security? . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1082

Different Environments Demand Different Security . . . . . . . 1083

Environment versus Application . . . . . . . . . . . . . . . . . . . . . . . 1084

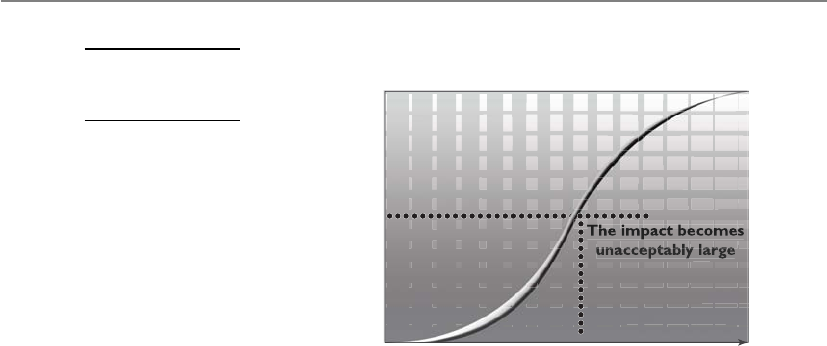

Functionality versus Security . . . . . . . . . . . . . . . . . . . . . . . . . 1085

Implementation and Default Issues . . . . . . . . . . . . . . . . . . . . 1086

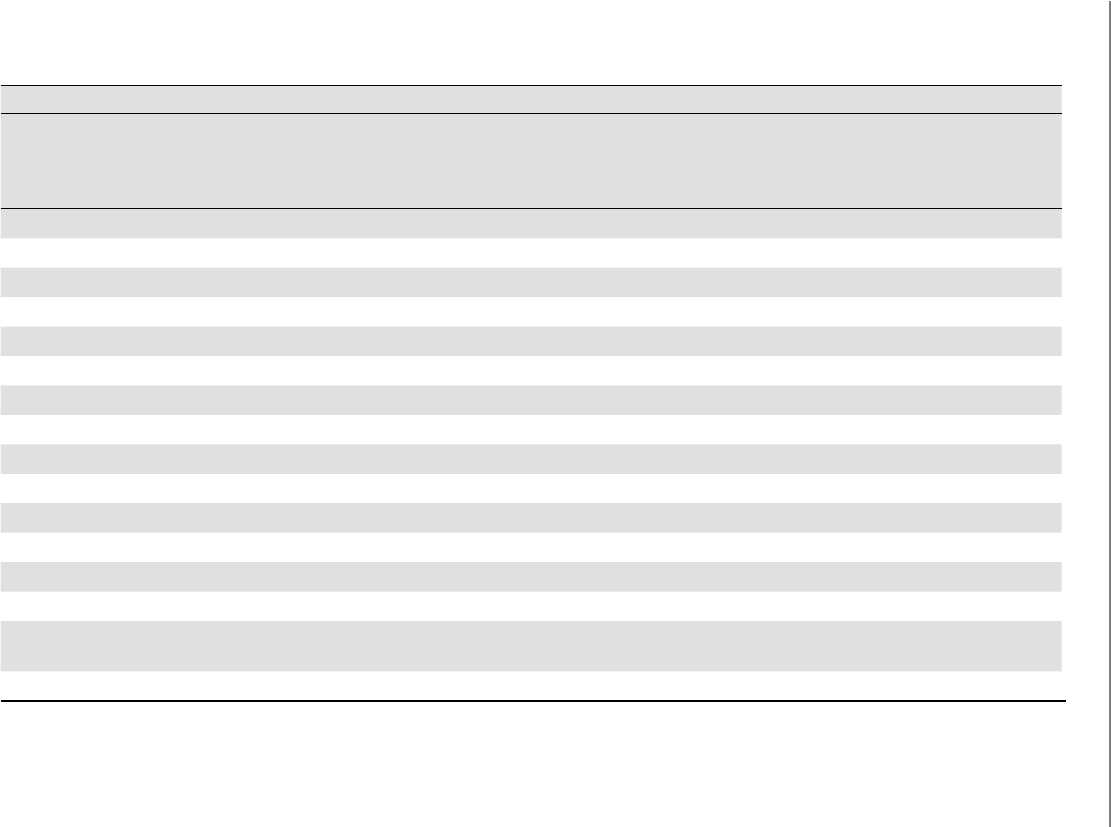

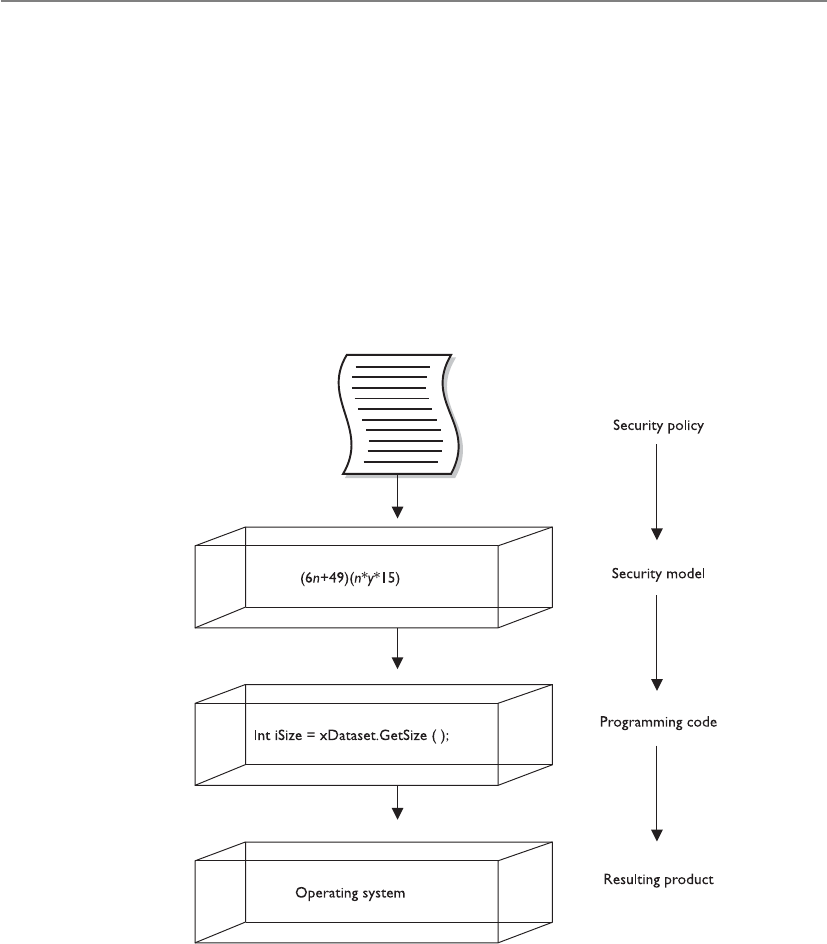

System Development Life Cycle . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1087

Initiation . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1089

Acquisition/Development . . . . . . . . . . . . . . . . . . . . . . . . . . . 1091

Implementation . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1092

Operations/Maintenance . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1092

Disposal . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1093

Software Development Life Cycle . . . . . . . . . . . . . . . . . . . . . . . . . . . 1095

Project Management . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1096

Requirements Gathering Phase . . . . . . . . . . . . . . . . . . . . . . . . 1096

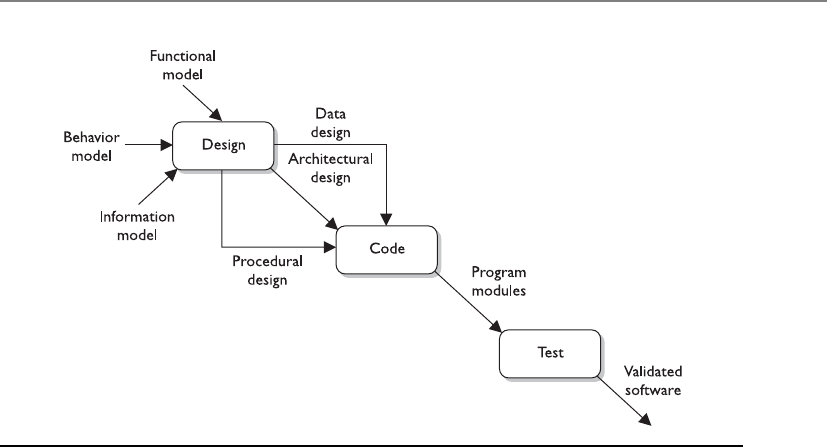

Design Phase . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1098

Development Phase . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1102

Testing/Validation Phase . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1104

Release/Maintenance Phase . . . . . . . . . . . . . . . . . . . . . . . . . . 1106

Secure Software Development Best Practices . . . . . . . . . . . . . . . . . . 1108

Software Development Models . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1111

Build and Fix Model . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1111

Waterfall Model . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1112

V-Shaped Model (V-Model) . . . . . . . . . . . . . . . . . . . . . . . . . . . 1112

Prototyping . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1113

Incremental Model . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1114

Spiral Model . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1115

Rapid Application Development . . . . . . . . . . . . . . . . . . . . . . . 1116

Agile Model . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1118

Capability Maturity Model Integration . . . . . . . . . . . . . . . . . . . . . . 1120

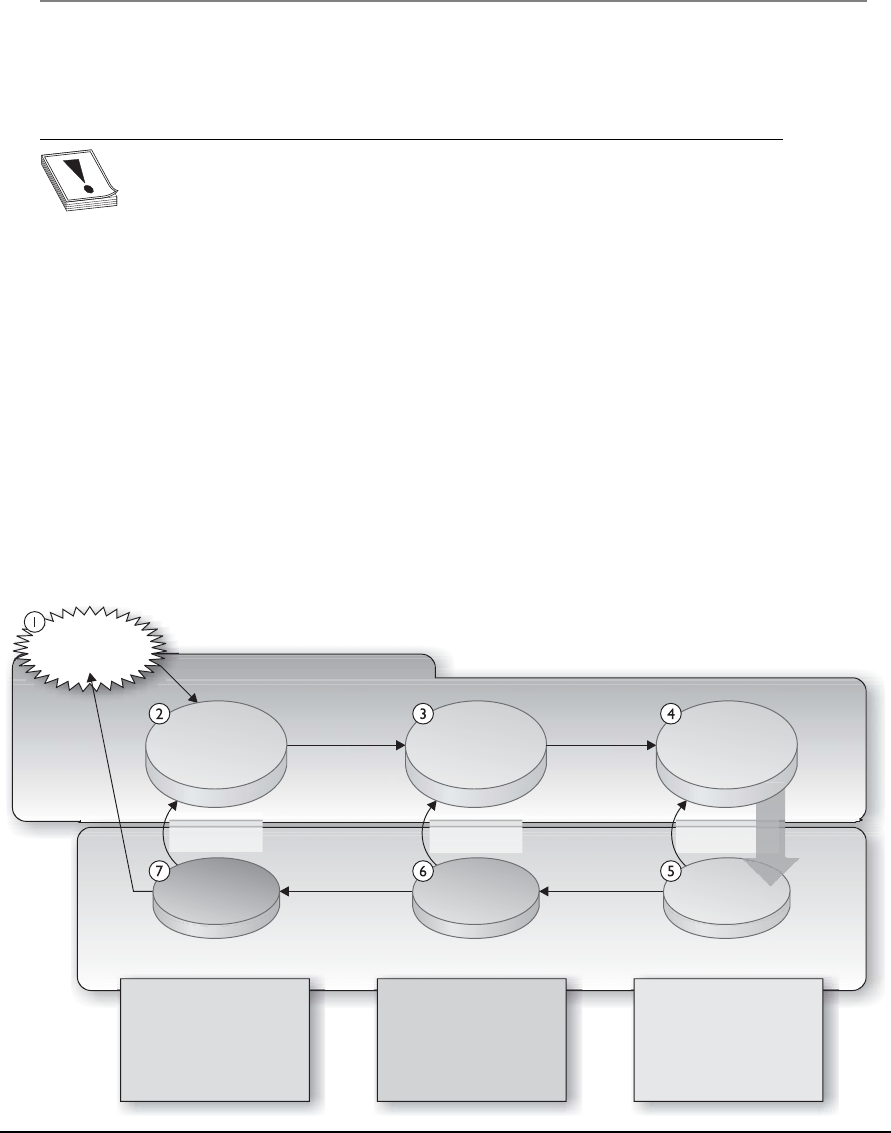

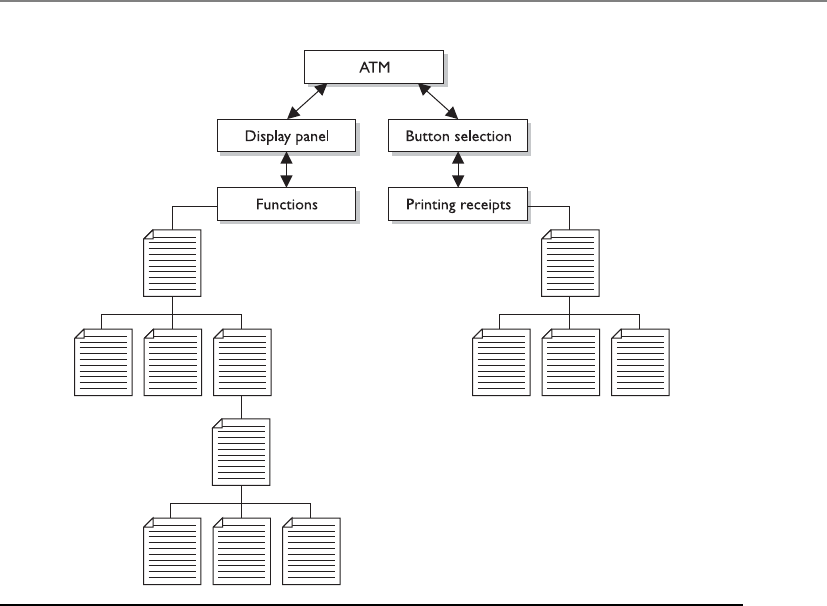

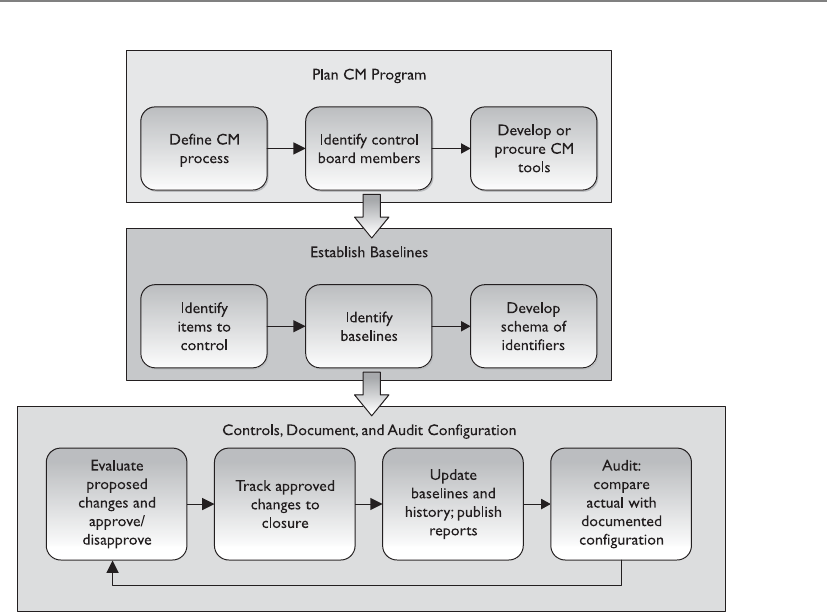

Change Control . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1122

Software Configuration Management . . . . . . . . . . . . . . . . . . . 1124

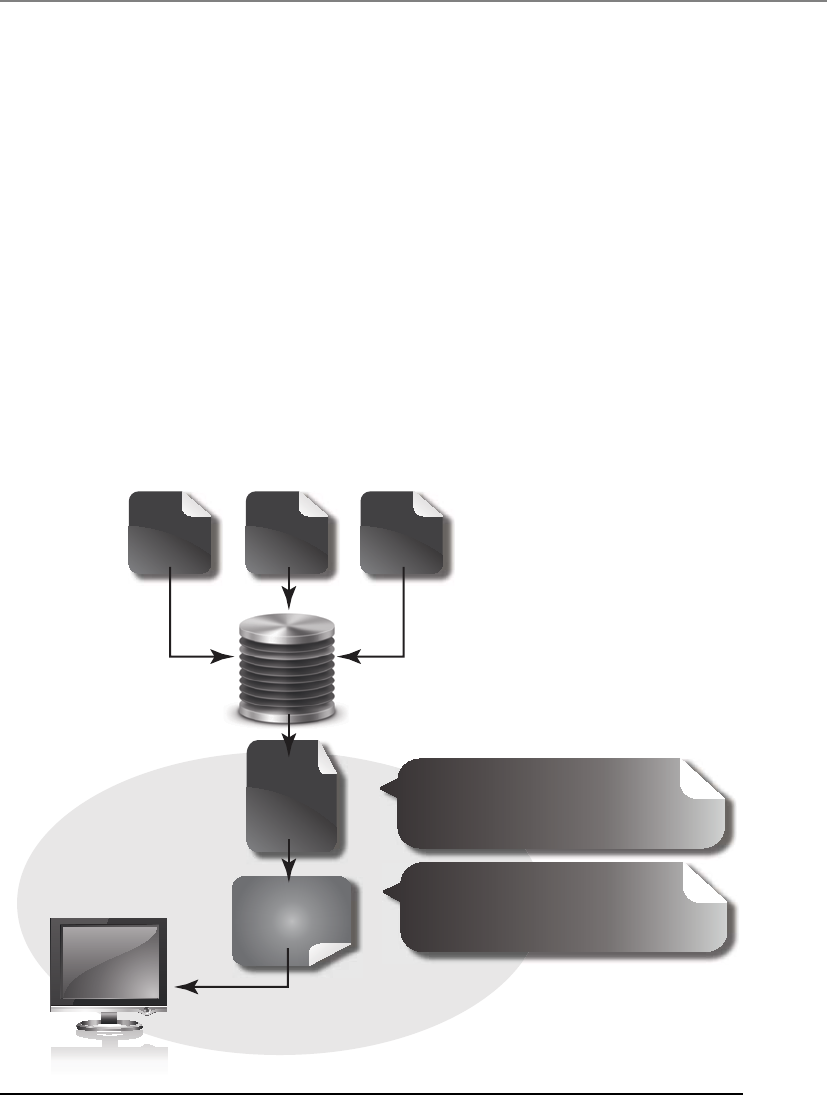

Programming Languages and Concepts . . . . . . . . . . . . . . . . . . . . . . 1125

Assemblers, Compilers, Interpreters . . . . . . . . . . . . . . . . . . . . 1128

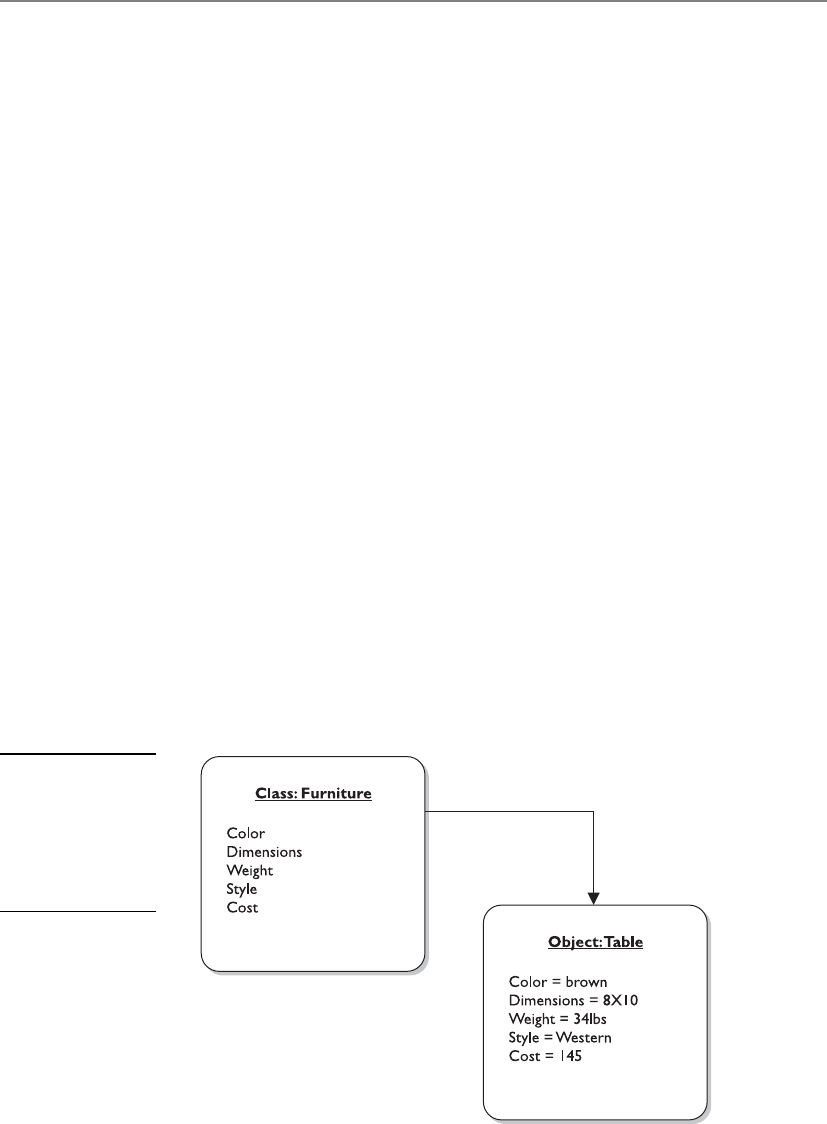

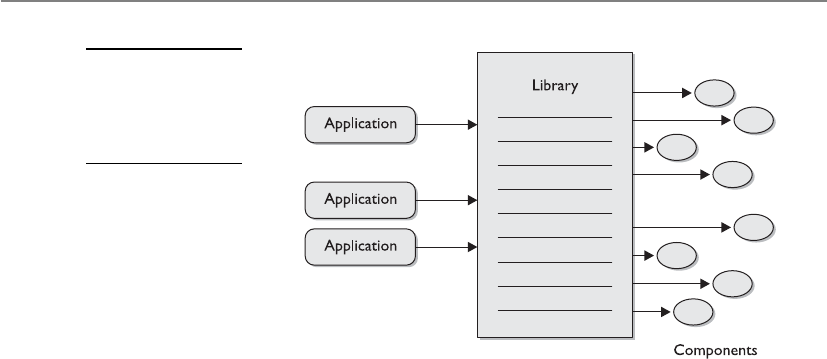

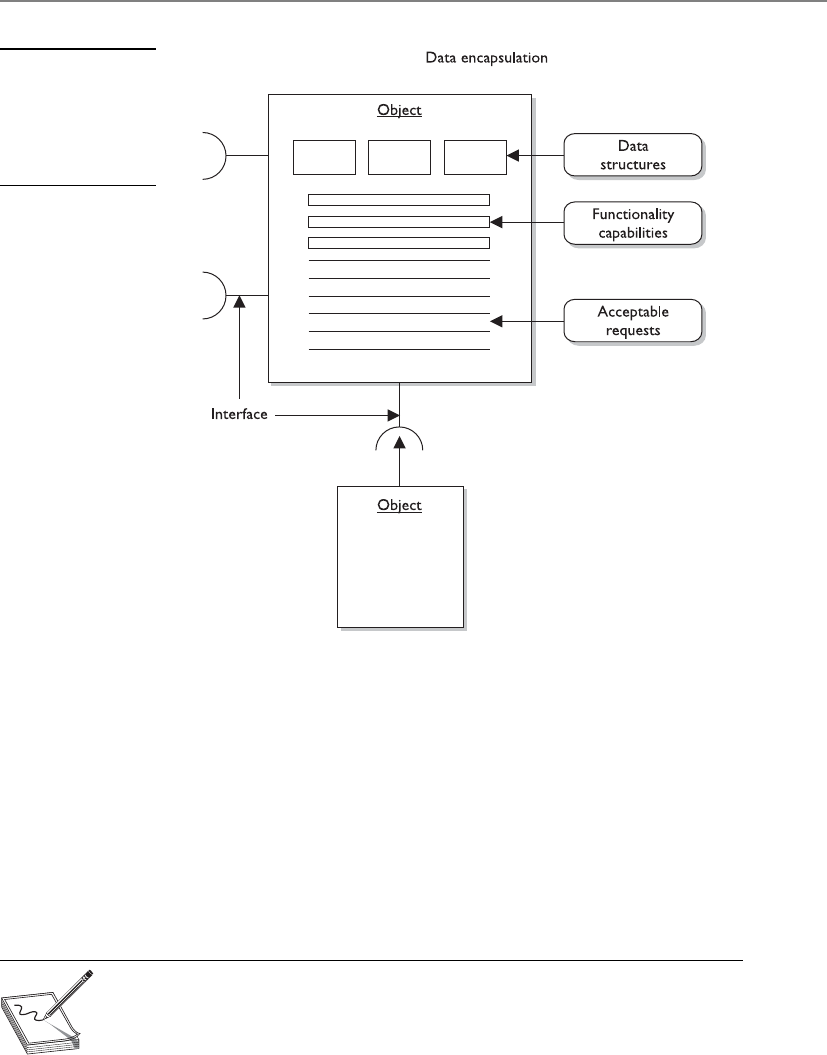

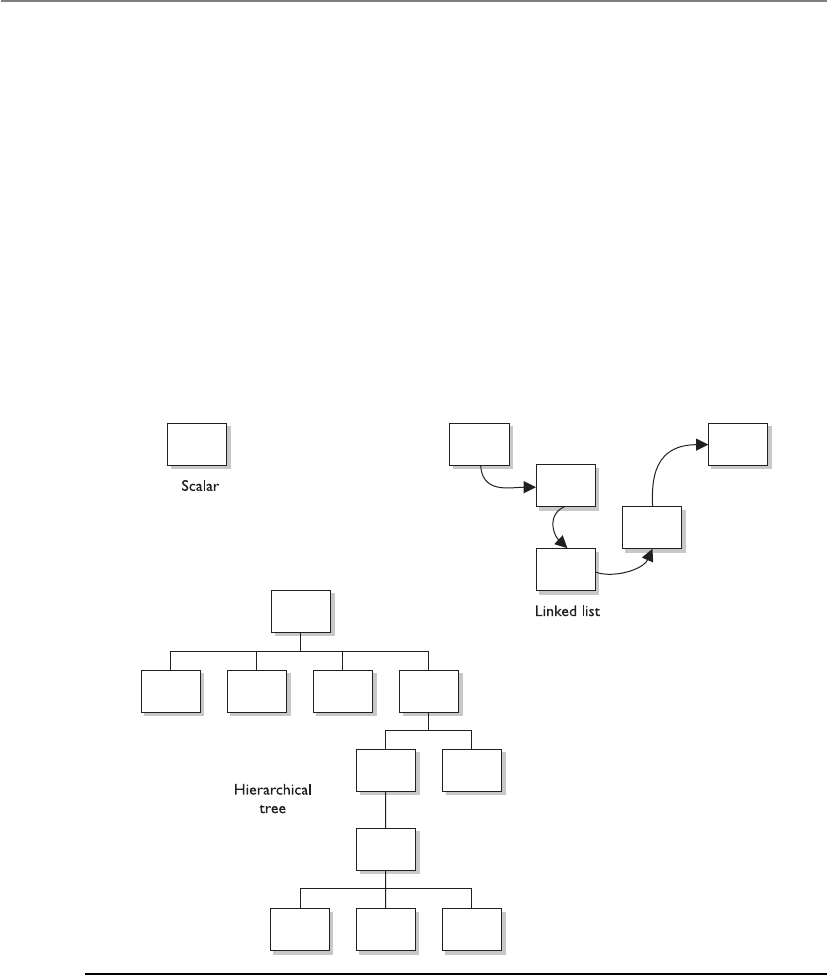

Object-Oriented Concepts . . . . . . . . . . . . . . . . . . . . . . . . . . . 1130

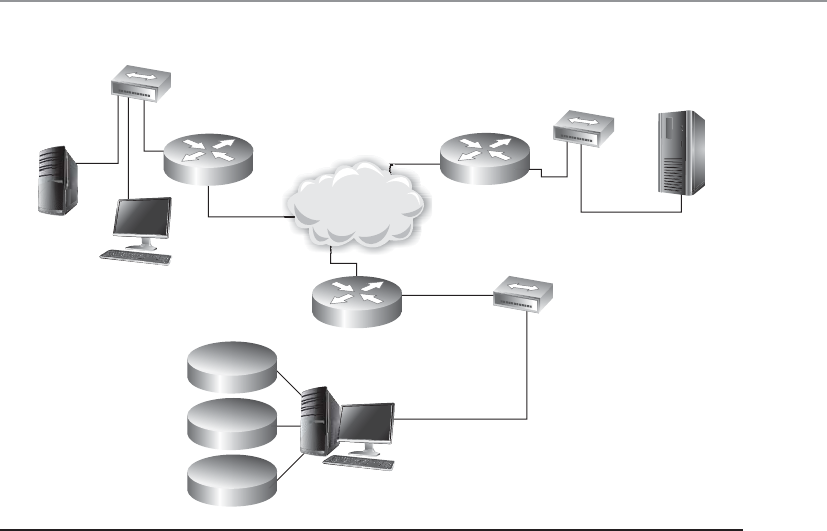

Distributed Computing . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1142

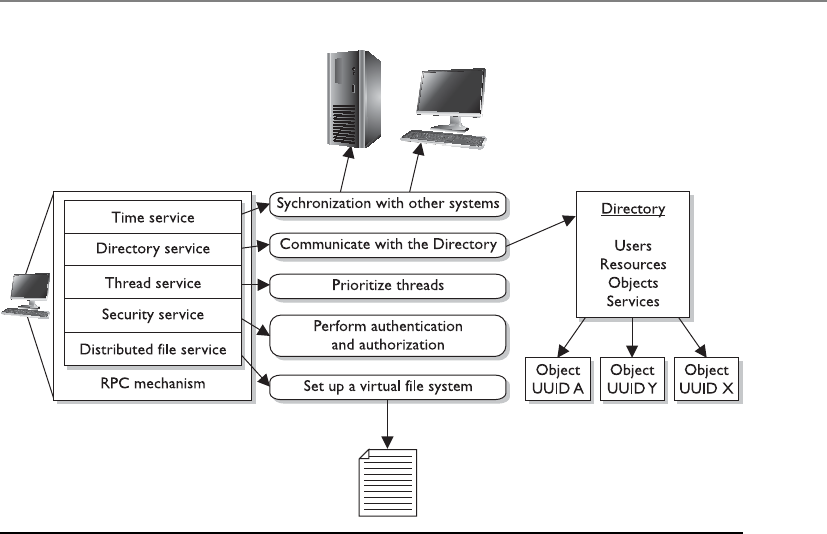

Distributed Computing Environment . . . . . . . . . . . . . . . . . . 1142

CORBA and ORBs . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1143

COM and DCOM . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1146

Java Platform, Enterprise Edition . . . . . . . . . . . . . . . . . . . . . . 1148

Service-Oriented Architecture . . . . . . . . . . . . . . . . . . . . . . . . . 1148

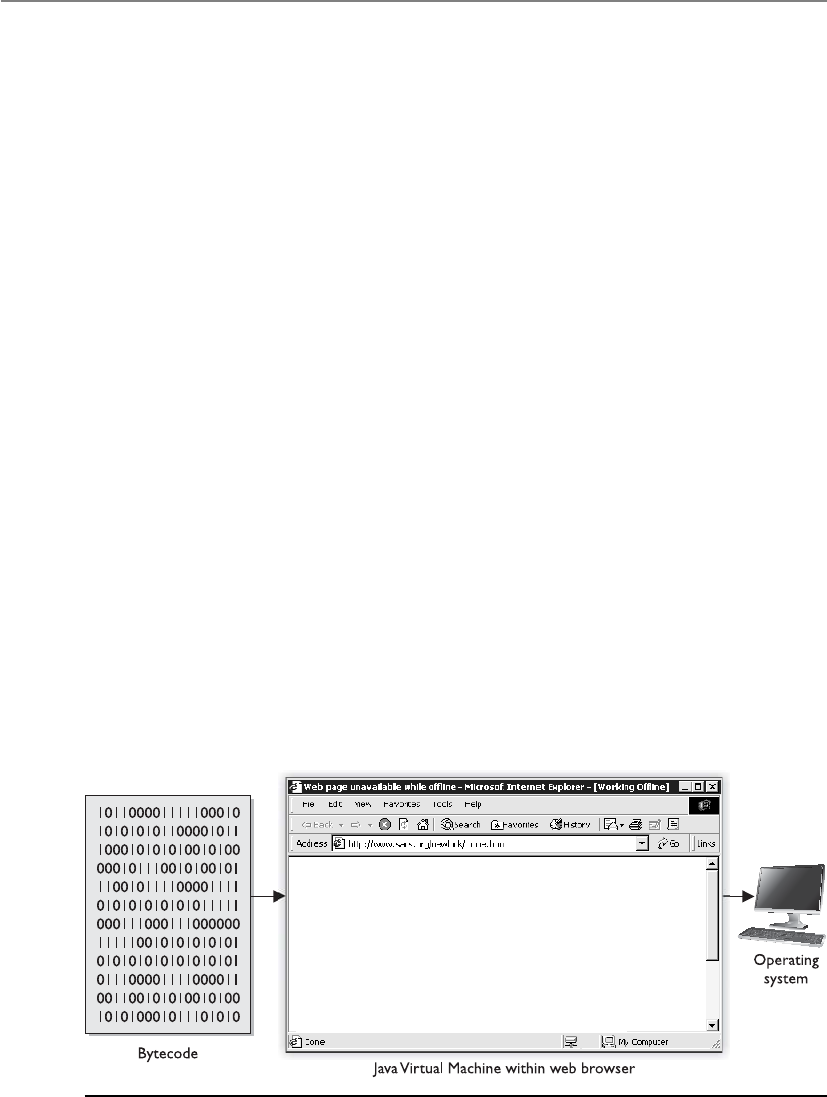

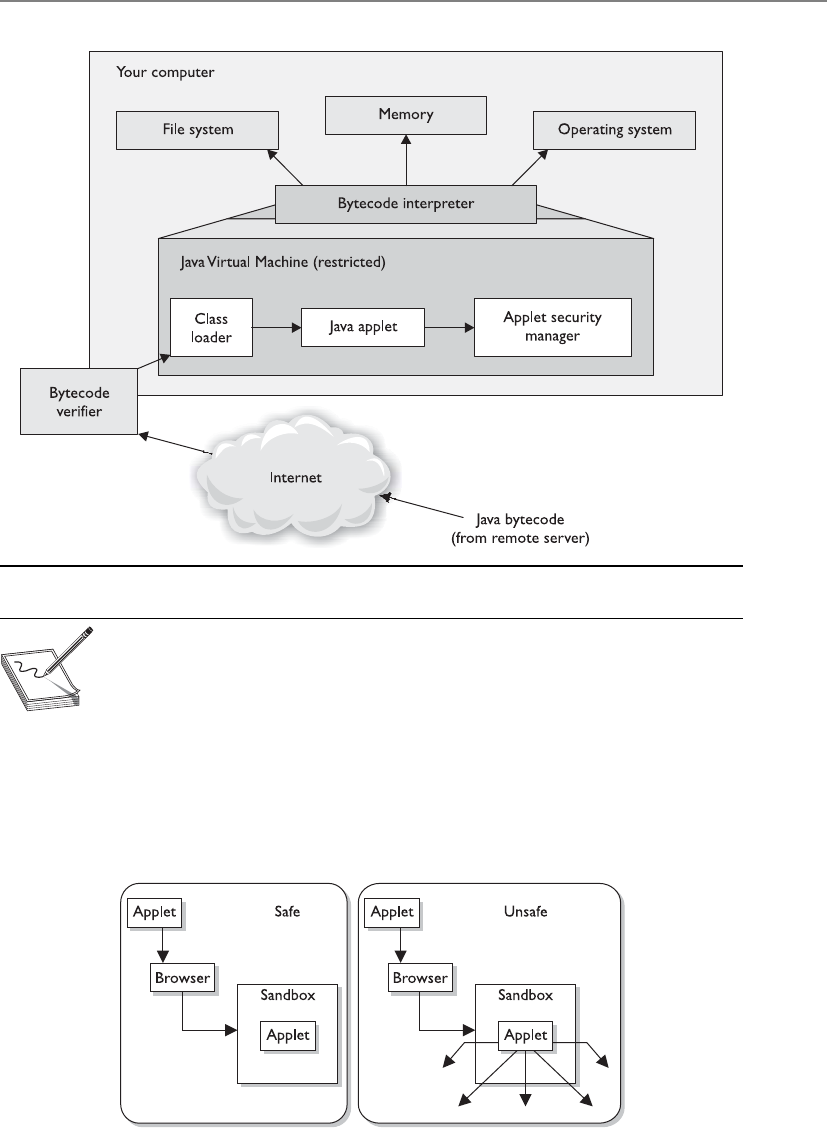

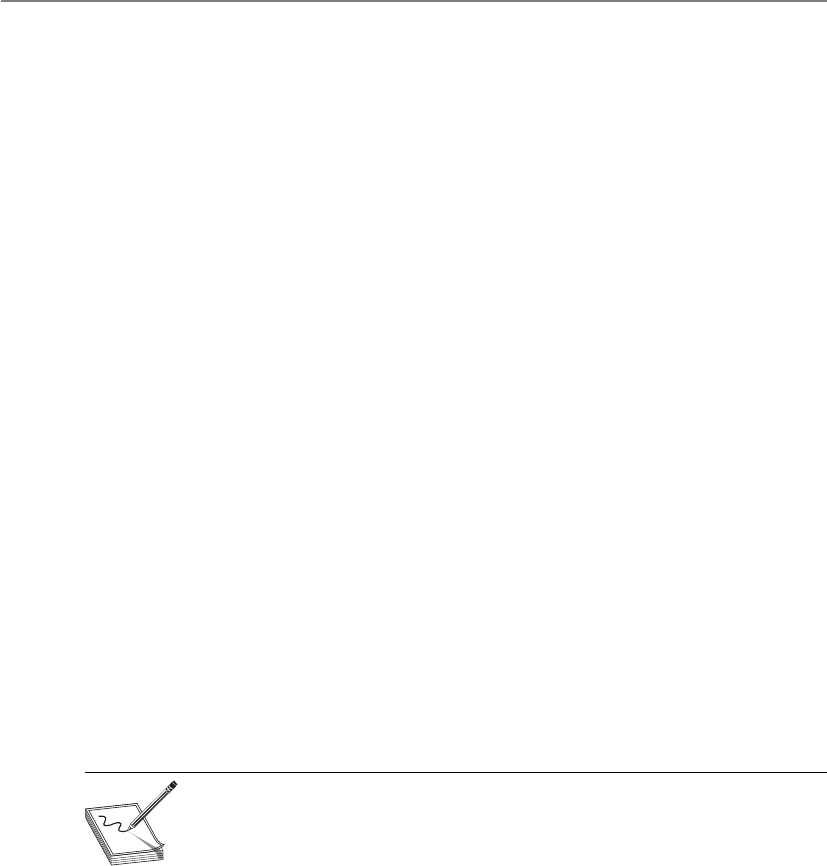

Mobile Code . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1153

Java Applets . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1154

ActiveX Controls . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1156

Web Security . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1157

Specific Threats for Web Environments . . . . . . . . . . . . . . . . . 1158

Web Application Security Principles . . . . . . . . . . . . . . . . . . . . 1167

Database Management . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1168

Database Management Software . . . . . . . . . . . . . . . . . . . . . . . 1170

Database Models . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1170

Database Programming Interfaces . . . . . . . . . . . . . . . . . . . . . 1176

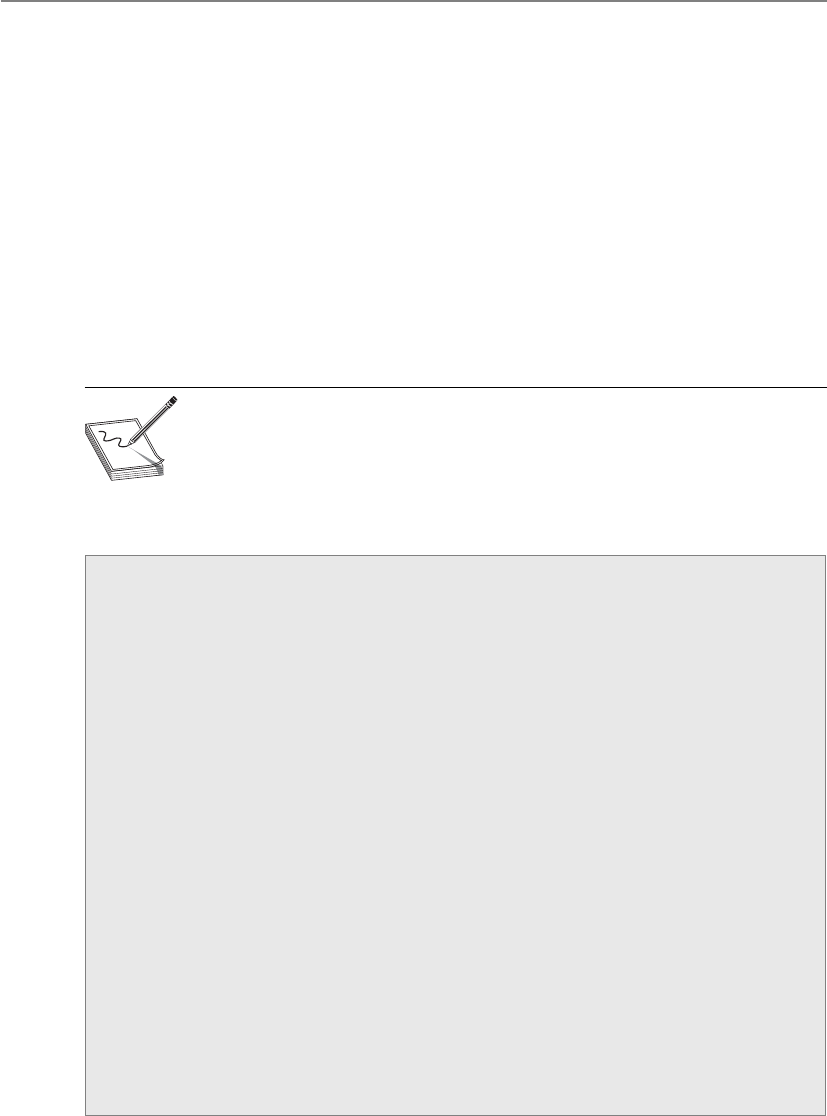

Relational Database Components . . . . . . . . . . . . . . . . . . . . . 1177

Integrity . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1180

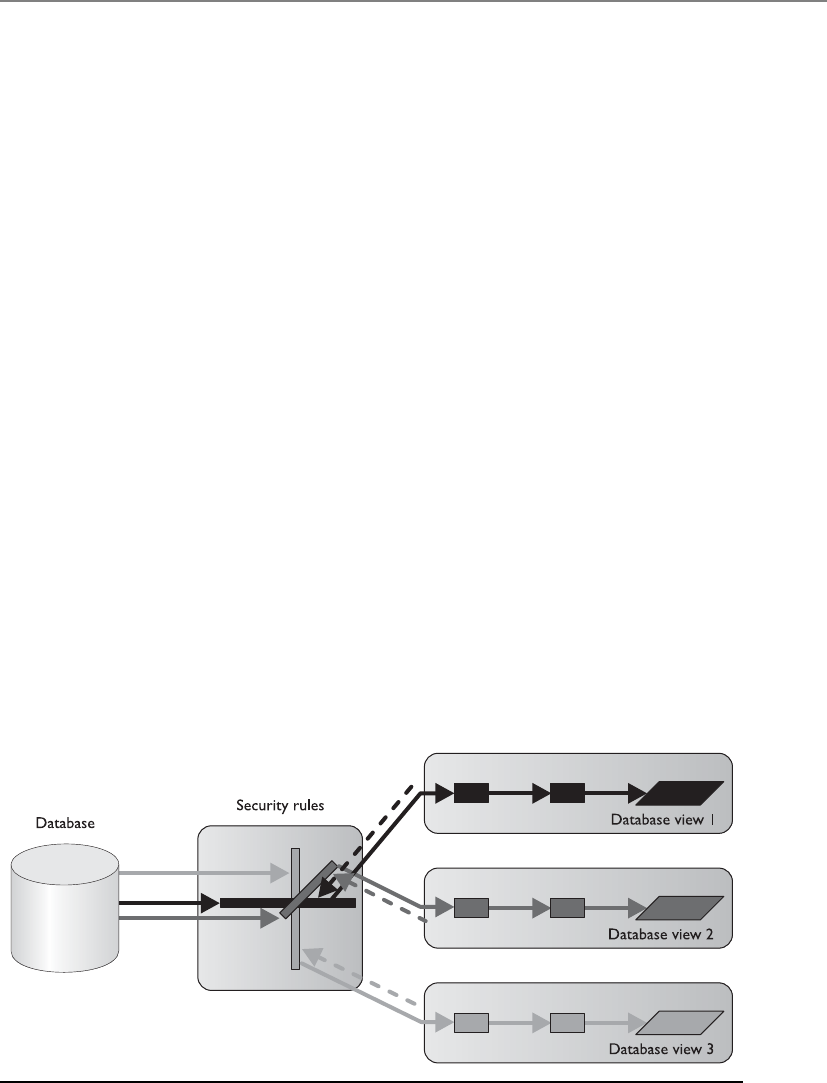

Database Security Issues . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1183

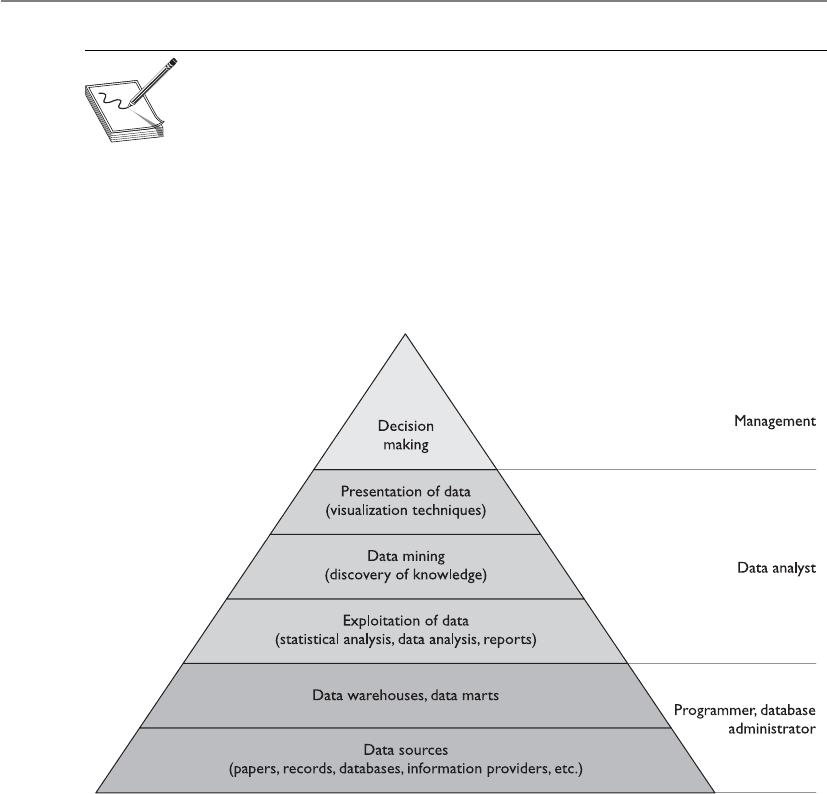

Data Warehousing and Data Mining . . . . . . . . . . . . . . . . . . . 1188

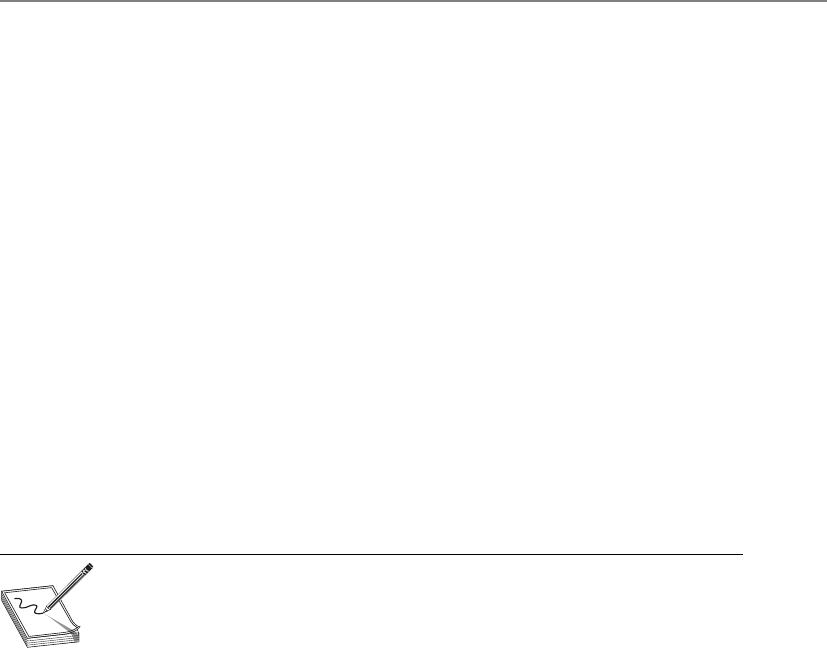

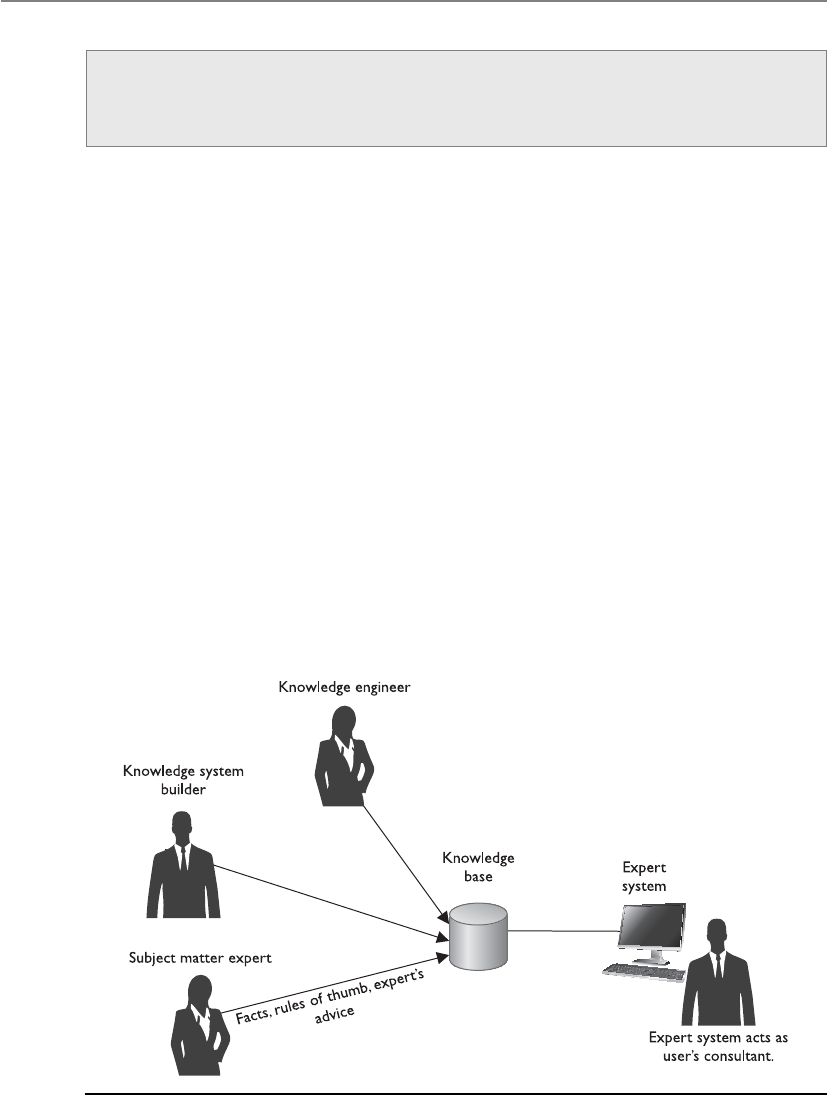

Expert Systems/Knowledge-Based Systems . . . . . . . . . . . . . . . . . . . 1192

Artificial Neural Networks . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1195

Malicious Software (Malware) . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1197

Viruses . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1199

Worms . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1202

Rootkit . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1202

Spyware and Adware . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1204

Botnets . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1204

Logic Bombs . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1206

Trojan Horses . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1206

Antivirus Software . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1207

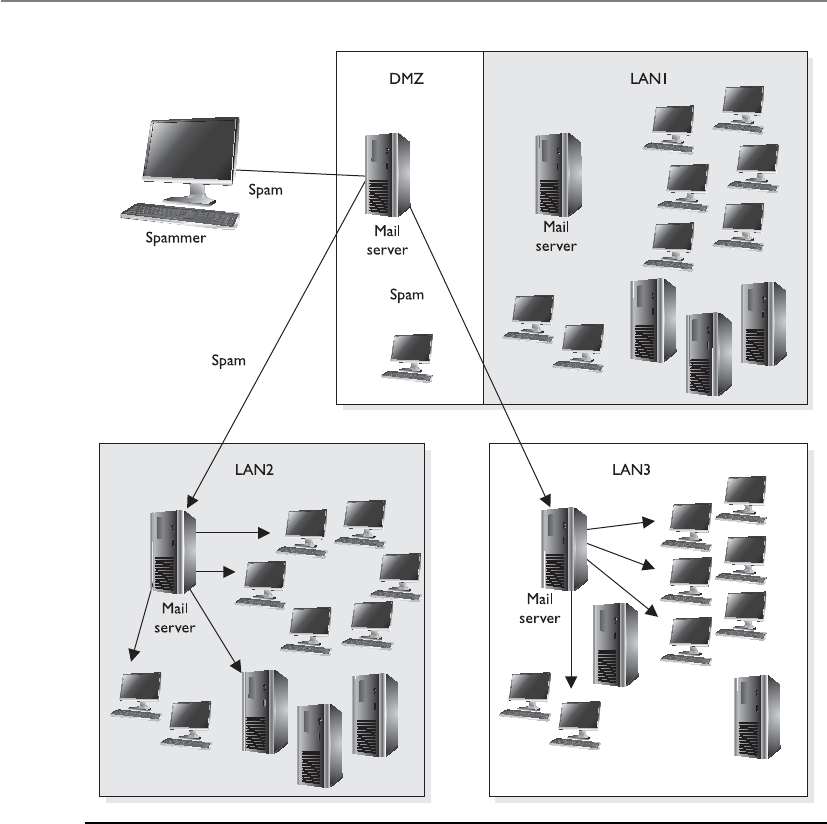

Spam Detection . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1210

Antimalware Programs . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1212

Summary . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1214

Quick Tips . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1215

Questions . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1220

Answers . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1227

Chapter 11 Security Operations . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1233

The Role of the Operations Department . . . . . . . . . . . . . . . . . . . . . 1234

Administrative Management . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1235

Security and Network Personnel . . . . . . . . . . . . . . . . . . . . . . . 1237

Accountability . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1239

Clipping Levels . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1239

Assurance Levels . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1240

Operational Responsibilities . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1240

Unusual or Unexplained Occurrences . . . . . . . . . . . . . . . . . . 1241

Deviations from Standards . . . . . . . . . . . . . . . . . . . . . . . . . . . 1241

Unscheduled Initial Program Loads (aka Rebooting) . . . . . . 1242

Asset Identification and Management . . . . . . . . . . . . . . . . . . 1242

System Controls . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1243

Trusted Recovery . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1244

Input and Output Controls . . . . . . . . . . . . . . . . . . . . . . . . . . . 1246

System Hardening . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1248

Remote Access Security . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1250

Configuration Management . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1251

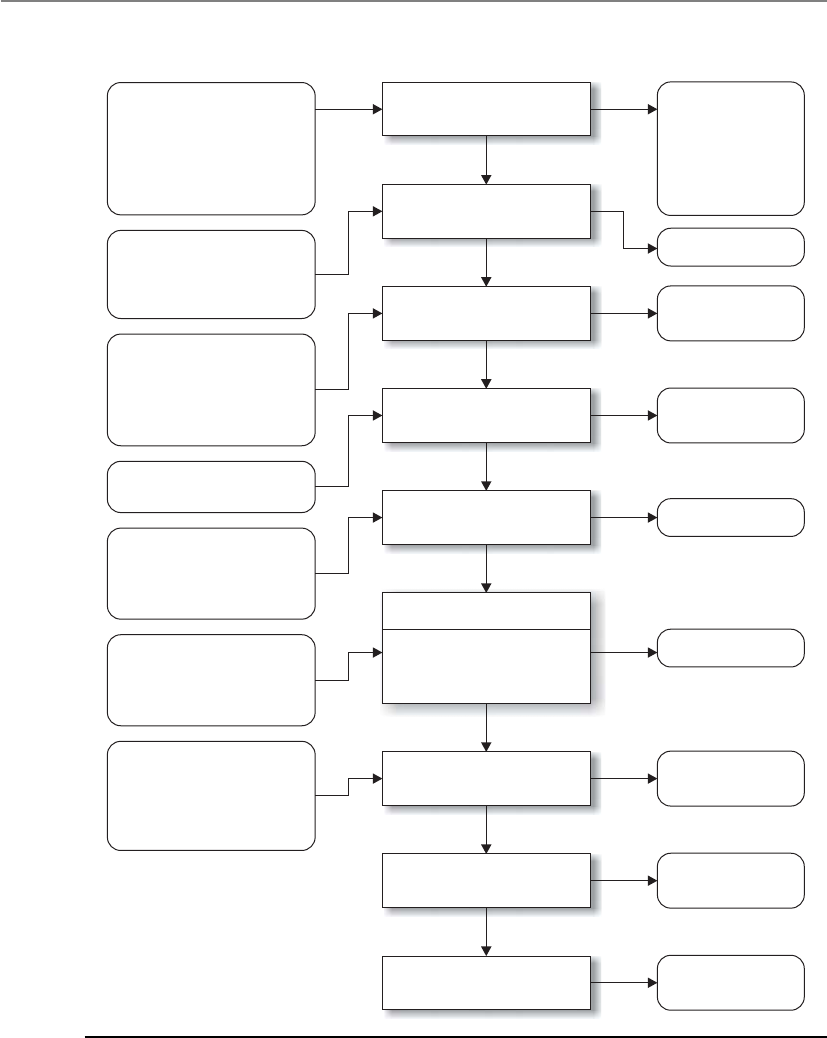

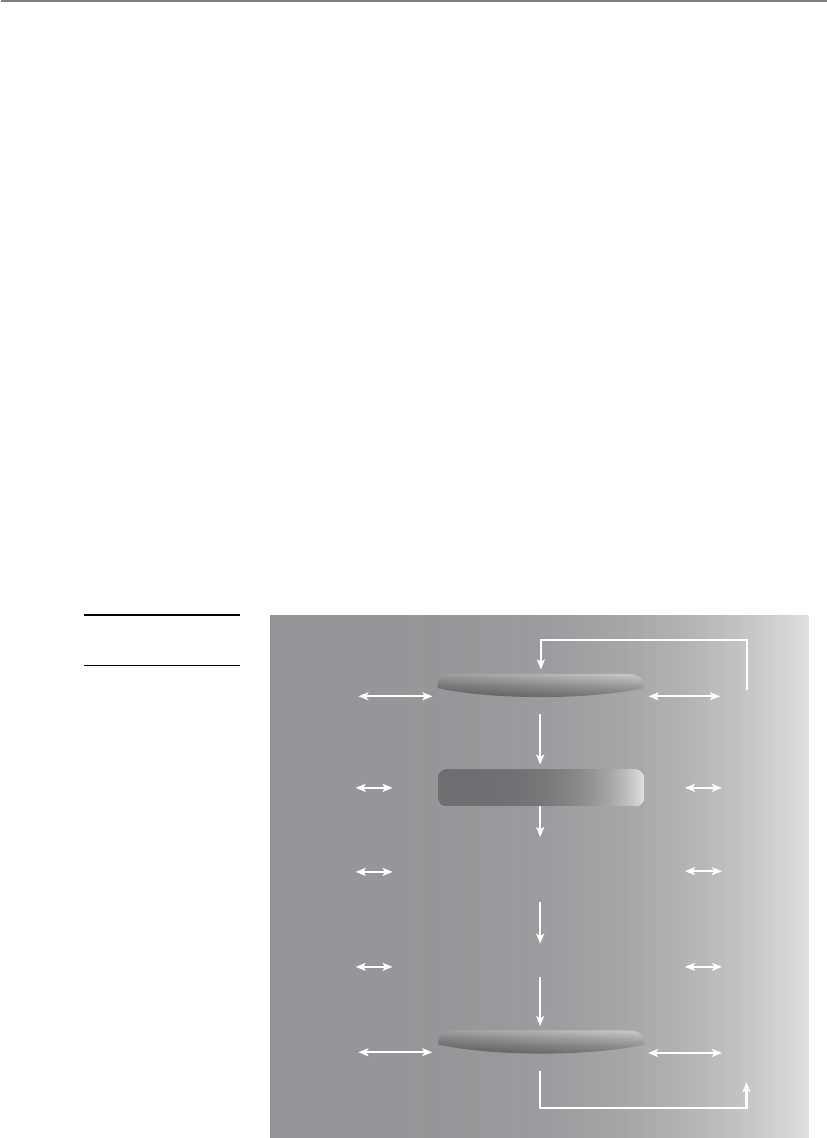

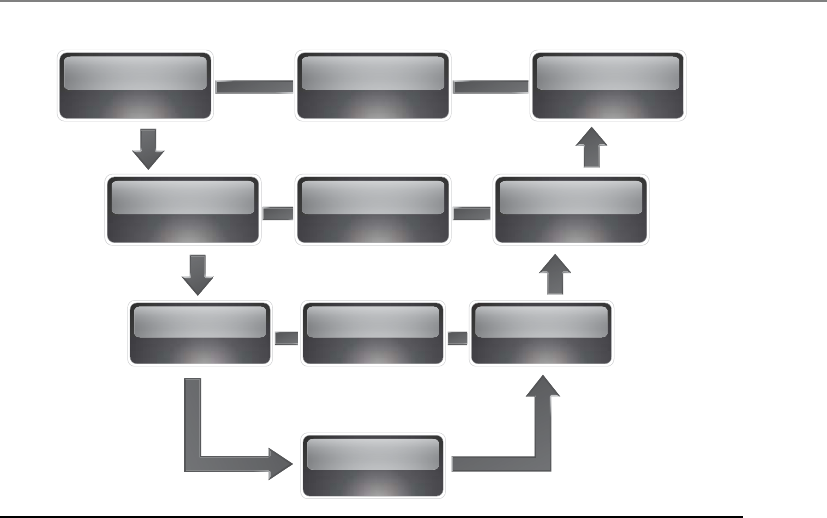

Change Control Process . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1252

Change Control Documentation . . . . . . . . . . . . . . . . . . . . . . 1253

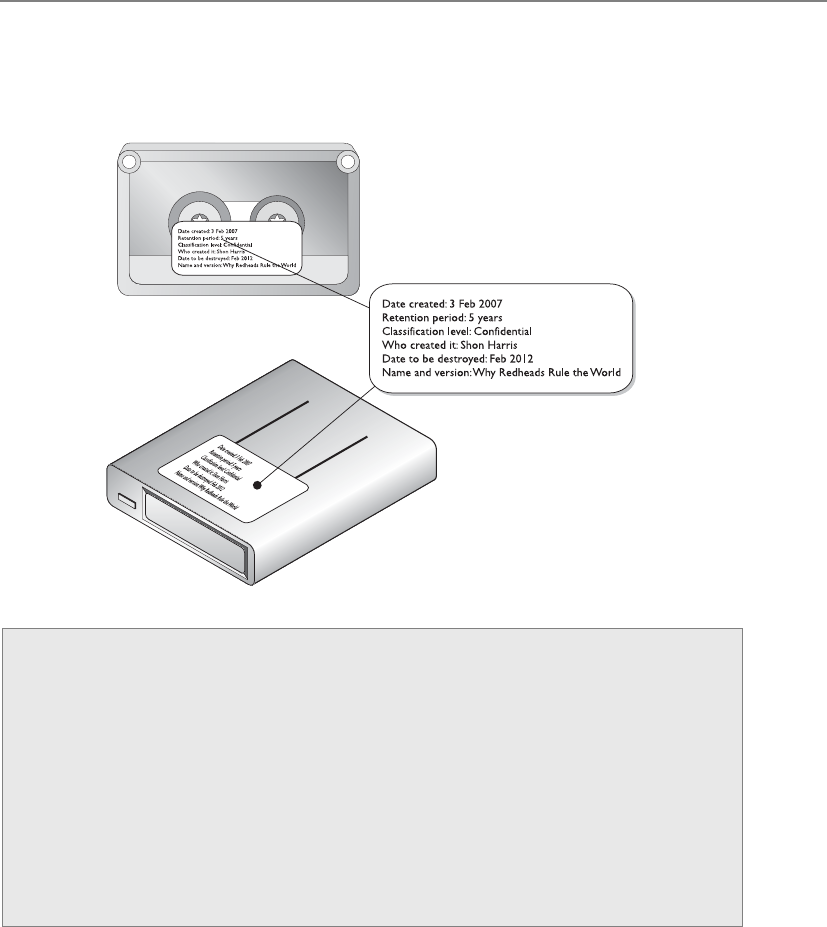

Media Controls . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1254

Data Leakage . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1262

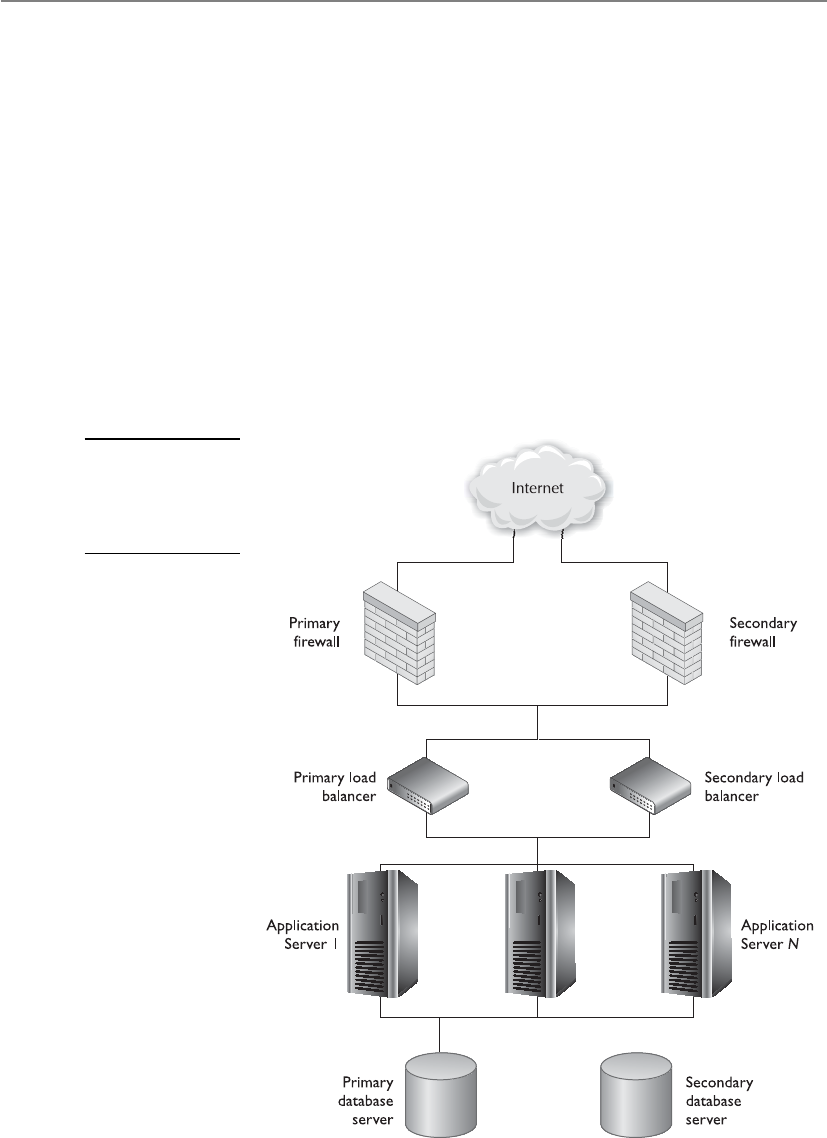

Network and Resource Availability . . . . . . . . . . . . . . . . . . . . . . . . . . 1263

Mean Time Between Failures . . . . . . . . . . . . . . . . . . . . . . . . . 1264

Mean Time to Repair . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1264

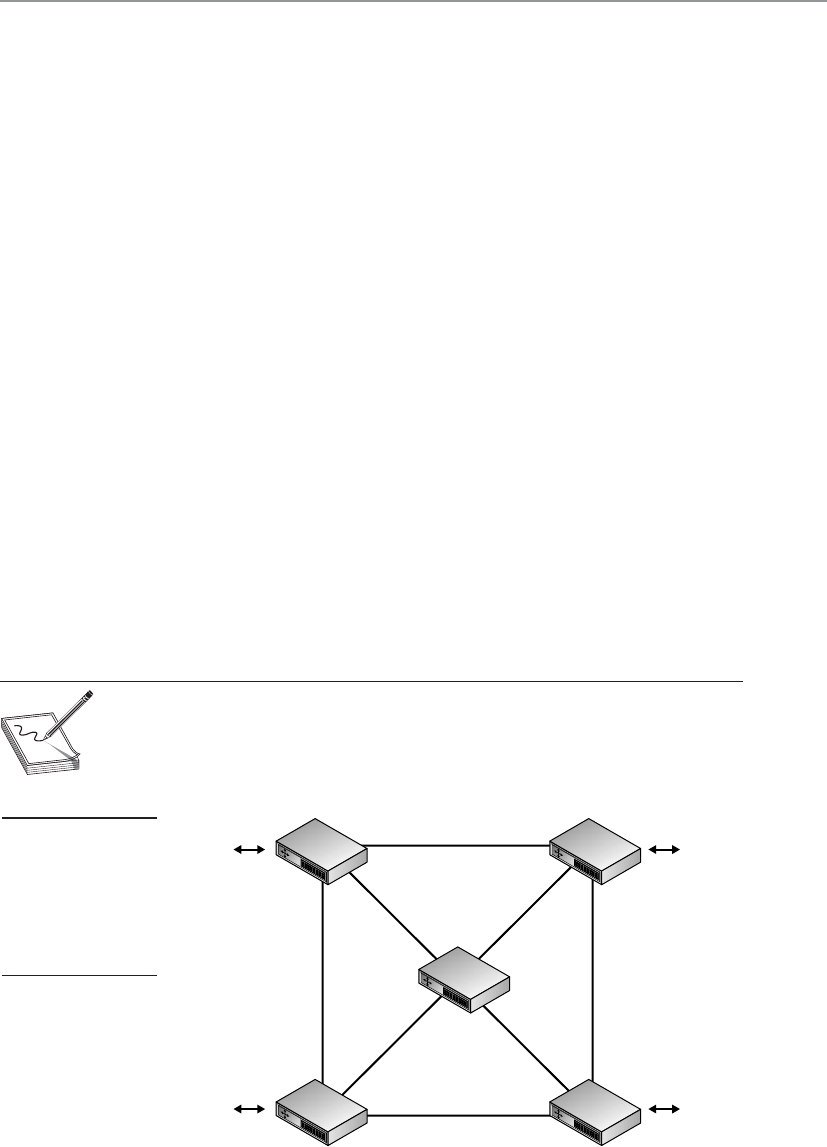

Single Points of Failure . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1265

Backups . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1273

Contingency Planning . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1276

Mainframes . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1277

E-mail Security . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1279

How E-mail Works . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1281

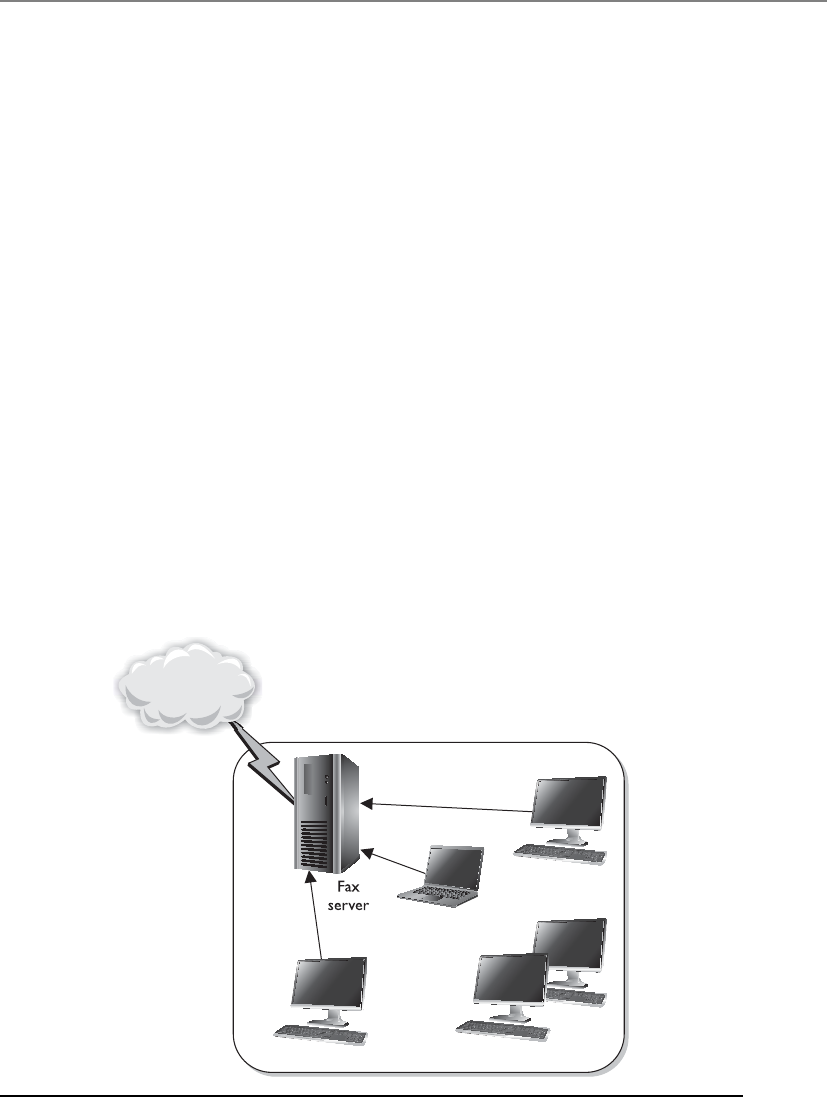

Facsimile Security . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1283

Hack and Attack Methods . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1285

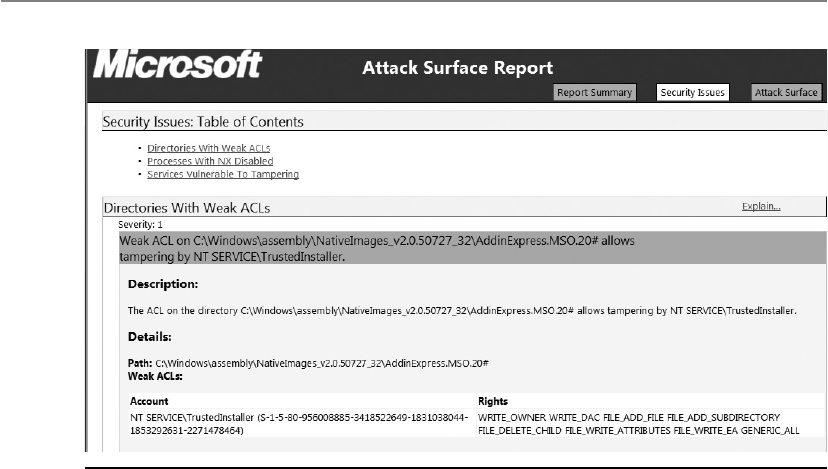

Vulnerability Testing . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1295

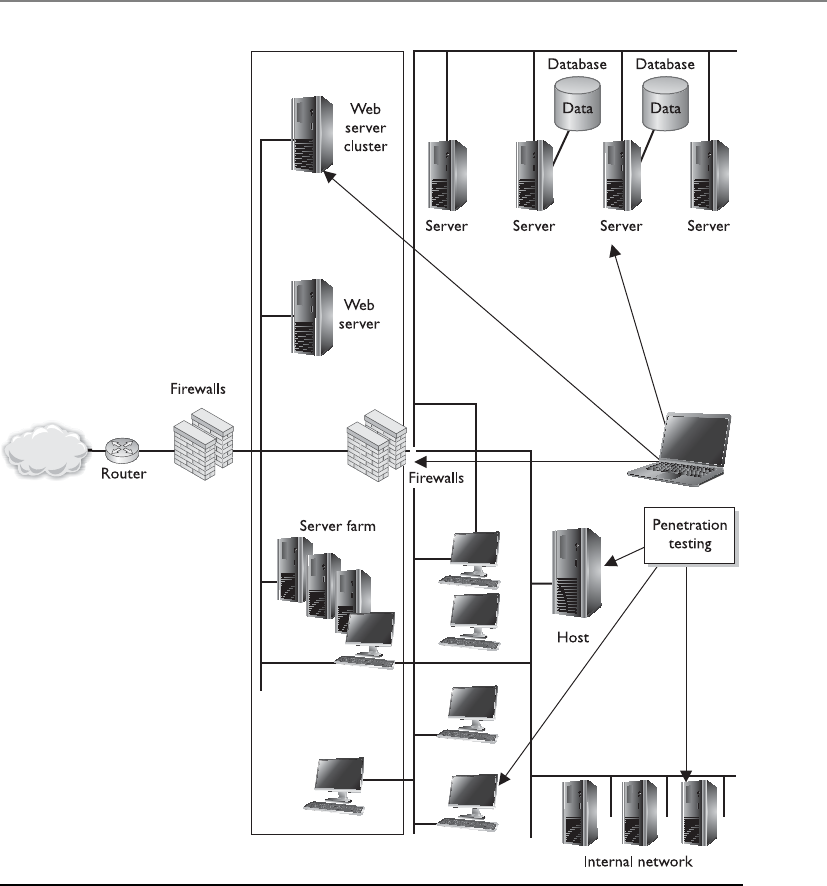

Penetration Testing . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1298

Wardialing . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1302

Other Vulnerability Types . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1303

Postmortem . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1305

Summary . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1307

Quick Tips . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1307

Questions . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1309

Answers . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1315

Appendix A Comprehensive Questions . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1319

Answers . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1357

Appendix B About the Download . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1379

Downloading the Total Tester . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1379

Total Tester System Requirements . . . . . . . . . . . . . . . . . . . . . . 1379

Installing and Running Total Tester . . . . . . . . . . . . . . . . . . . . 1379

About Total Tester CISSP Practice Exam Software . . . . . . . . . . . 1380

Media Center Download . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1380

Cryptography Video Sample . . . . . . . . . . . . . . . . . . . . . . . . . . . 1380

Technical Support . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1382

Index . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1385

Glossary. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . G-1

FOREWORDS

This year marks my 39th year in the security business, with 26 of those years concentrating on information security. What changes we have seen over that period: from computers the size of rooms that had to be water-cooled to phones that, in computing terms, are orders of magnitude more powerful than the computers NASA used to put a man on the moon.

We have watched in awe as technology has evolved and our quality of life has improved. For example, we can make payments from our phones, our cars are run by computers that optimize performance and fuel economy, and we can check in and get our boarding passes from our hotel rooms.

Unfortunately, there are those who see these advances as opportunities for themselves or their organizations to gain politically or financially by finding vulnerabilities in these technologies and exploiting them to their advantage.

The information security specialty evolved to combat these adversaries. One of the first organizations to formally recognize this specialty was (ISC)², which was founded in 1988. In 1994, (ISC)² created the Certified Information Systems Security Professional (CISSP) credential and conducted the first examination. This credential gave managers and employers (and potential employers) assurance that the holder possessed a baseline understanding of the ten domains that make up the Common Body of Knowledge (CBK).

The CISSP All-in-One Exam Guide by Shon Harris is one of many publications designed to prepare a potential CISSP candidate for the exam. Admittedly, I have probably not seen every one of these guides, but I would argue that I have seen most. The difference between Shon’s book and the others is that her book doesn’t try to teach to the exam; instead, it teaches the material one needs to pass the exam. This means that when the exam is over and the credential has been awarded, Shon’s book will still be on your bookshelf (or tablet) as a valuable reference guide.

I have known Shon for close to 15 years and continue to be struck by her dedication, ethics, and honor. She is the most dedicated advocate of our profession I have met. She works constantly to improve her materials so learners at all levels can better understand the topics. She has developed a learning model that helps ensure everyone in an organization, from the bottom to the C-suite, knows what they need to know to make informed choices and exercise due diligence with the data entrusted to them.

It is a privilege to have the opportunity to help introduce this work to the prospective CISSP. I’m a huge Shon fan, and I think that after learning from this book, you will be too. Enjoy your experience, and know that your work in achieving this credential will be worth the effort.

Tom Madden, MPA, CISSP, CISM

Chief Information Security Officer for the Centers for Disease Control

Today’s cybersecurity landscape is an ever-changing environment in which the greatest attack vector is our normal activities: e-mail, web surfing, and so on. The adversary can and will continue to use mobile apps, e-mail (phishing, spam), the Web (redirects), and other tools of our day-to-day activities to get to intellectual property, personal information, and other sensitive data. Hacktivists, criminals, and terrorists use this information to gain unauthorized access to systems and sensitive data. Hacktivists commonly use the information gathered during attacks to convey messages that support their agenda. According to Symantec, there were 163 new vulnerabilities associated with mobile computing, 286 million unique malware variants, a 93 percent increase in web attacks, and 260,000 identities exposed in 2010. McAfee reported that during the first two quarters of 2011 there were 16,200 new websites with malicious reputations, and an average of 2,600 new phishing sites appeared each day.

We have also seen new technologies proliferate into our day-to-day activities. Smartphones and tablets have become as common as the home computer. New tools mean new vulnerabilities that cybersecurity experts must deal with. Information technology implementers tend to design and implement quickly, and one of the least discussed aspects of new technology is the risk it may pose to the overall system or organization. Tech departments, in conjunction with the CSO/CISO, need to find the best way to use new technology while making security (physical and cyber) a strong part of the deployment strategy.

Training and awareness are important to help end users, cybersecurity practitioners, and CxOs understand the effect their actions have within the organization. Even what technology users do at home, when they telework and connect to the business network or take data home, can have consequences. Security policy and its enforcement also help establish a stronger security baseline. Many organizations have realized this and have begun writing and implementing acceptable-use policies for their networks. These policies, if properly enforced, will help minimize adverse events on those networks.

There will never be a single solution to all cybersecurity challenges. Defense-in-depth, best business practices, training and awareness, and timely information sharing are the techniques that will minimize the impact of cyber adversaries. Cyber experts need to keep their leadership informed of the latest threats and of methods to minimize their effects. Many of the day-to-day actions and decisions a CFO, CEO, COO, etc., make will have an impact on the mission; security decisions must be part of that process as well.

The CISSP All-in-One Exam Guide has remained a staple of my reference library long after I obtained my certification. Shon Harris has written, and continues to improve, this comprehensive study guide. Individuals at all levels of an organization, especially those with a role in cybersecurity, should strive to get the CISSP, and this study guide is a key part of successfully gaining the certification. Preparing for and obtaining the CISSP can be an important tool in the cyber expert’s toolkit. The ten domains associated with the CISSP help individuals at all levels look at information security from many angles. We tend to focus on one aspect of the information security field; the CISSP opens our eyes to many others. It gives the security professional an overall perspective on cybersecurity. From policy-related domains to technical domains, the CISSP and this study guide tie many aspects of cybersecurity together. Even if certification is not part of your immediate training plan, this book will help anyone become a better security practitioner and improve their organization’s security posture.

Randy Vickers

Alexa Strategies, Inc.

Cybersecurity Strategist and Consultant

Former Director of US-CERT and former Chief of the DoD CERT (JTF-GNO)

ACKNOWLEDGMENTS

I would like to thank all the people in the information security industry who are driven by passion, dedication, and a true sense of doing right. The best security people are the ones who are driven toward an ethical outcome.

I would also like to thank the many people in the industry who have taken the time to reach out to me and let me know how my work directly affects their lives. I appreciate the time and effort people put in just to send me these kind words.

For my sixth edition, I would also like to thank the following individuals:

• David Miller, whose work ethic, loyalty, and friendship have inspired me. I am truly grateful to have David as part of my life. I never would have known the secret world of tequila without him.

• Clement Dupuis, who, with his deep passion for sharing and helping others, has proven a wonderful and irreplaceable friend.

• My company team members, Susan Young and Teresa Griffin. I never express my deep appreciation for each of you often enough.

• Greg Andelora, the graphic artist who created several of the graphics for this book and my other projects. We tragically lost him at a young age, and we miss him.

• Tom Madden and Randy Vickers, for their wonderful forewords to this book.

• My editor, Tim Green, and acquisitions coordinator, Stephanie Evans, who sometimes have to practice the patience of Job when dealing with me on these projects.

• The military personnel who have fought so hard over the last ten years in two wars and have sacrificed so much.

• My best friend and mother, Kathy Conlon, who is always there for me through thick and thin.

Most especially, I would like to thank my husband, David Harris, for his continual support and love. Without his steadfast confidence in me, I would not have been able to accomplish half the things I have taken on in my life.

To obtain material from the disk that accompanies the printed version of this e-Book, please follow the instructions in Appendix B, About the Download.

CHAPTER 1

Becoming a CISSP

This chapter presents the following:

• Description of the CISSP certification

• Reasons to become a CISSP

• What the CISSP exam entails

• The Common Body of Knowledge and what it contains

• The history of (ISC)² and the CISSP exam

• An assessment test to gauge your current knowledge of security

This book is intended not only to provide you with the necessary information to help you gain a CISSP certification, but also to welcome you into the exciting and challenging world of security.

The Certified Information Systems Security Professional (CISSP) exam covers ten