Cloud Foundry: The Definitive Guide, by Duncan C. E. Winn

- Copyright

- Table of Contents

- Foreword

- Preface

- Chapter 1. The Cloud-Native Platform

- Chapter 2. Concepts

- Undifferentiated Heavy Lifting

- The Cloud Operating System

- Do More

- The Application as the Unit of Deployment

- Using cf push Command to Deploy

- Staging

- Self-Service Application Life Cycle

- The Twelve-Factor Contract

- Release Engineering through BOSH

- Built-In Resilience and Fault Tolerance

- Aggregated Streaming of Logs and Metrics

- Security

- UAA Management

- Organizations and Spaces

- Domains Hosts and Routes

- Rolling Upgrades and Blue/Green Deployments

- Summary

- Chapter 3. Components

- Chapter 4. Preparing Your Cloud Foundry Environment

- Chapter 5. Installing and Configuring Cloud Foundry

- Chapter 6. Diego

- Chapter 7. Routing Considerations

- Chapter 8. Containers, Containers, Containers

- Chapter 9. Buildpacks and Docker

- Chapter 10. BOSH Concepts

- Chapter 11. BOSH Releases

- Chapter 12. BOSH Deployments

- Chapter 13. BOSH Components and Commands

- Chapter 14. Debugging Cloud Foundry

- Cloud Foundry Acceptance Tests

- Logging

- Typical Failure Scenarios

- Scenario One: The App Is Not Reachable

- Scenario Two: Network Address Translation Instance Deleted (Network Failure)

- Scenario Three: Security Group Misconfiguration That Blocks Ingress Traffic

- Scenario Four: Invoking High Memory Usage That Kills a Container

- Scenario Five: Route Collision

- Scenario 6: Release Job Process Failures

- Scenario 7: Instance Group Failure

- Summary

- Chapter 15. User Account and Authentication Management

- Chapter 16. Designing for Resilience, Planning for Disaster

- Chapter 17. Cloud Foundry Roadmap

- Index

- About the Author

- Colophon

Cloud Foundry: The Definitive Guide

Develop, Deploy, and Scale

Duncan C. E. Winn

Beijing Boston Farnham Sebastopol Tokyo

978-1-491-93243-8

[LSI]

Cloud Foundry: The Definitive Guide

by Duncan C. E. Winn

Copyright © 2017 Duncan Winn. All rights reserved.

Printed in the United States of America.

Published by O’Reilly Media, Inc., 1005 Gravenstein Highway North, Sebastopol, CA 95472.

O’Reilly books may be purchased for educational, business, or sales promotional use. Online editions are also available for most titles (http://oreilly.com/safari). For more information, contact our corporate/institutional sales department: 800-998-9938 or corporate@oreilly.com.

Editors: Brian Anderson and Virginia Wilson

Production Editor: Melanie Yarbrough

Copyeditor: Octal Publishing, Inc.

Proofreader: Christina Edwards

Indexer: Judy McConville

Interior Designer: David Futato

Cover Designer: Karen Montgomery

Illustrator: Rebecca Demarest

May 2017: First Edition

Revision History for the First Edition

2017-05-22: First Release

The O’Reilly logo is a registered trademark of O’Reilly Media, Inc. Cloud Foundry: The Definitive Guide, the cover image, and related trade dress are trademarks of O’Reilly Media, Inc.

While the publisher and the author have used good faith efforts to ensure that the information and instructions contained in this work are accurate, the publisher and the author disclaim all responsibility for errors or omissions, including without limitation responsibility for damages resulting from the use of or reliance on this work. Use of the information and instructions contained in this work is at your own risk. If any code samples or other technology this work contains or describes is subject to open source licenses or the intellectual property rights of others, it is your responsibility to ensure that your use thereof complies with such licenses and/or rights.

To my daughters, Maya and Eva.

Dream BIG. The world awaits you...

Table of Contents

Foreword xv

Preface xvii

1. The Cloud-Native Platform 1

Why You Need a Cloud-Native Platform 1

Cloud-Native Platform Concepts 2

The Structured Platform 4

The Opinionated Platform 4

The Open Platform 5

Summary 5

2. Concepts 7

Undifferentiated Heavy Lifting 7

The Cloud Operating System 8

Do More 9

The Application as the Unit of Deployment 10

Using cf push Command to Deploy 11

Staging 11

Self-Service Application Life Cycle 12

The Twelve-Factor Contract 13

Release Engineering through BOSH 14

Built-In Resilience and Fault Tolerance 15

Self-Healing Processes 16

Self-Healing VMs 16

Self-Healing Application Instance Count 16

Resiliency Through Availability Zones 16

Aggregated Streaming of Logs and Metrics 17

Security 19

Distributed System Security 19

Environmental Risk Factors for Advanced Persistent Threats 20

Challenge of Minimal Change 20

The Three Rs of Enterprise Security 21

UAA Management 23

Organizations and Spaces 23

Orgs 24

Spaces 24

Resource Allocation 25

Domains Hosts and Routes 25

Route 25

Domains 26

Context Path–Based Routing 26

Rolling Upgrades and Blue/Green Deployments 27

Summary 27

3. Components 29

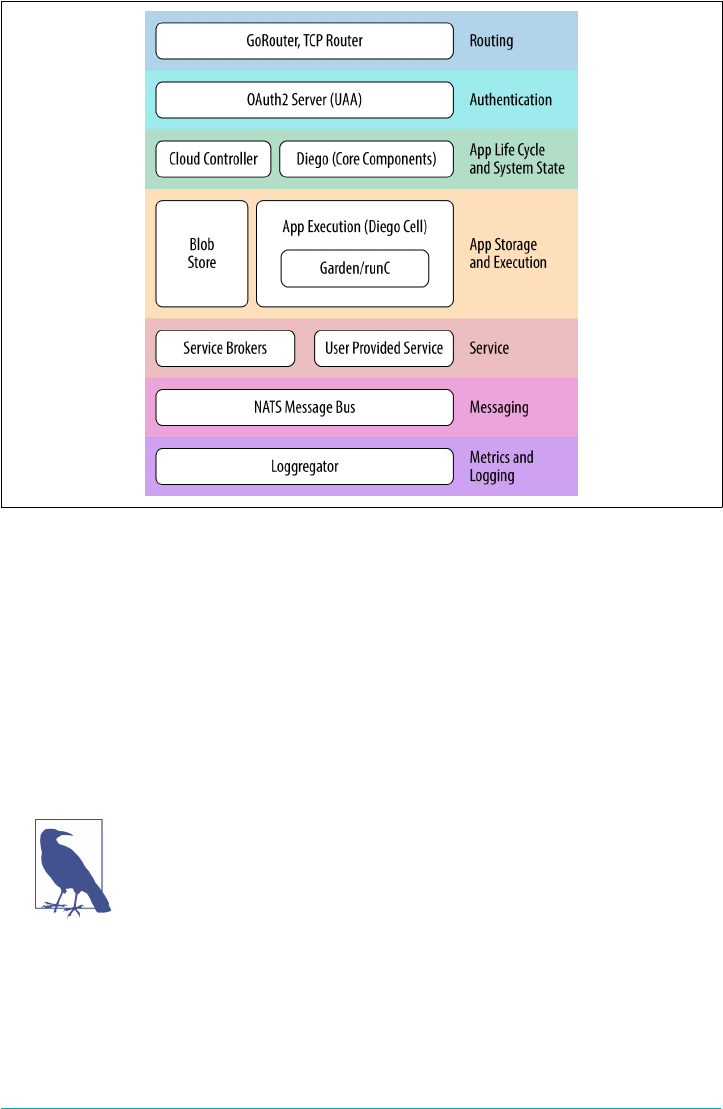

Component Overview 30

Routing via the Load Balancer and GoRouter 31

User Management and the UAA 32

The Cloud Controller 33

System State 34

The Application Life-Cycle Policy 34

Application Execution 35

Diego 35

Garden and runC 35

Metrics and Logging 36

Metron Agent 36

Loggregator 36

Messaging 37

Additional Components 37

Stacks 37

A Marketplace of On-Demand Services 37

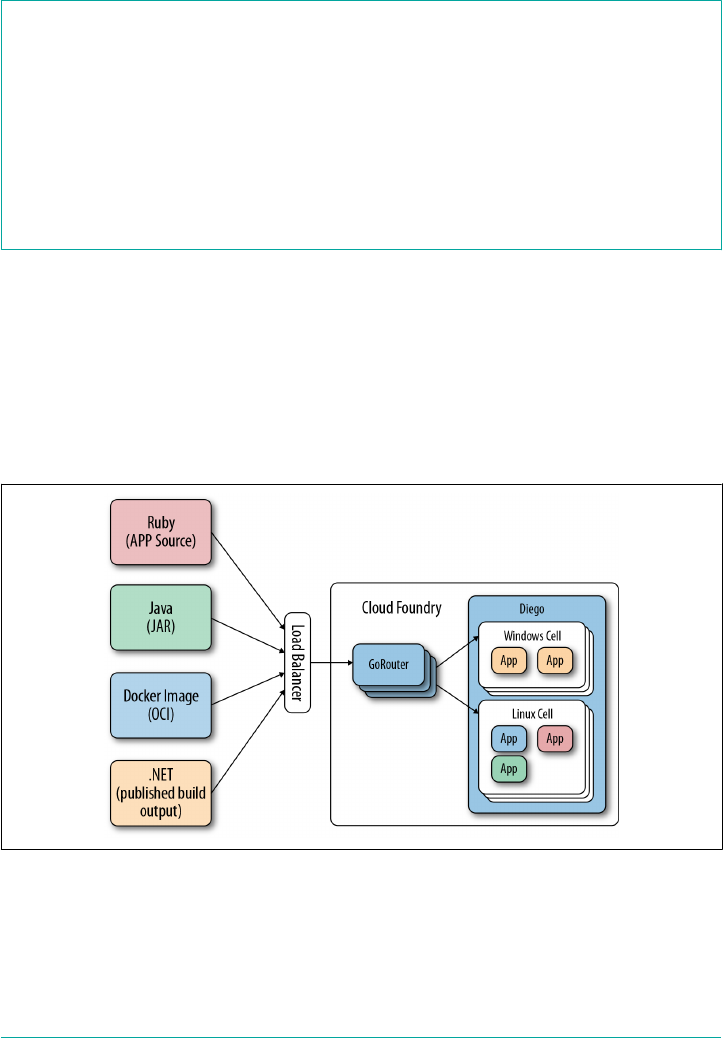

Buildpacks and Docker Images 39

Infrastructure and the Cloud Provider Interface 40

The Cloud Foundry GitHub Repository 40

Summary 41

4. Preparing Your Cloud Foundry Environment 43

Installation Steps 43

Non-technical Considerations 44

Team Structure: Platform Operations for the Enterprise 44

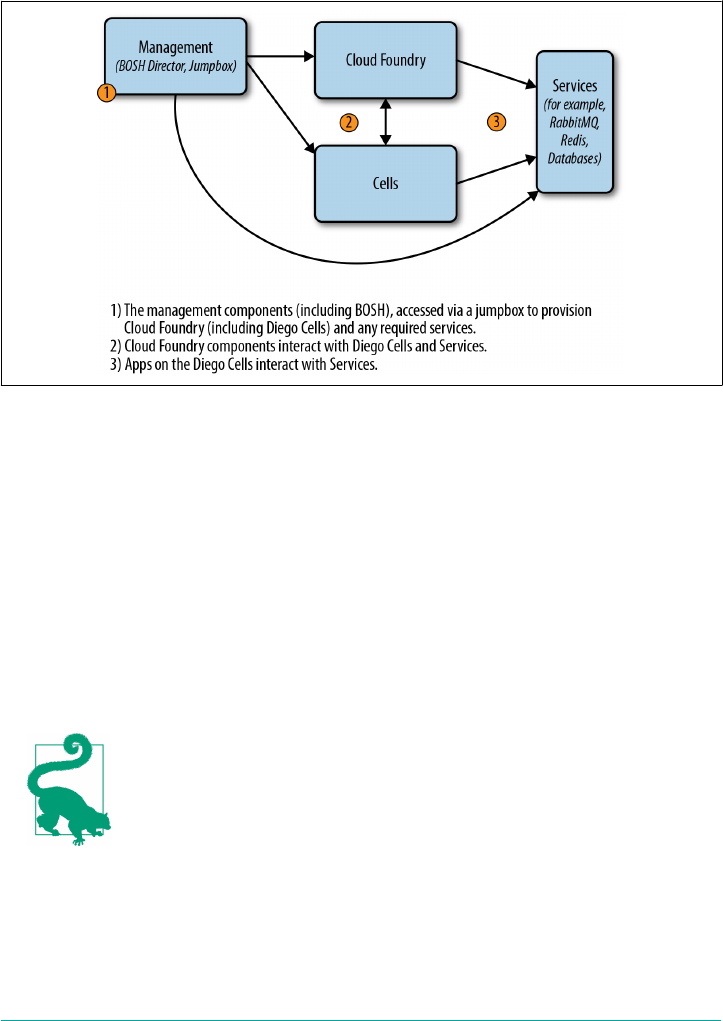

Deployment Topology 46

Cloud Foundry Dependencies and Integrations 47

IaaS and Infrastructure Design 48

Designing for Resilience 50

Sizing and Scoping the Infrastructure 50

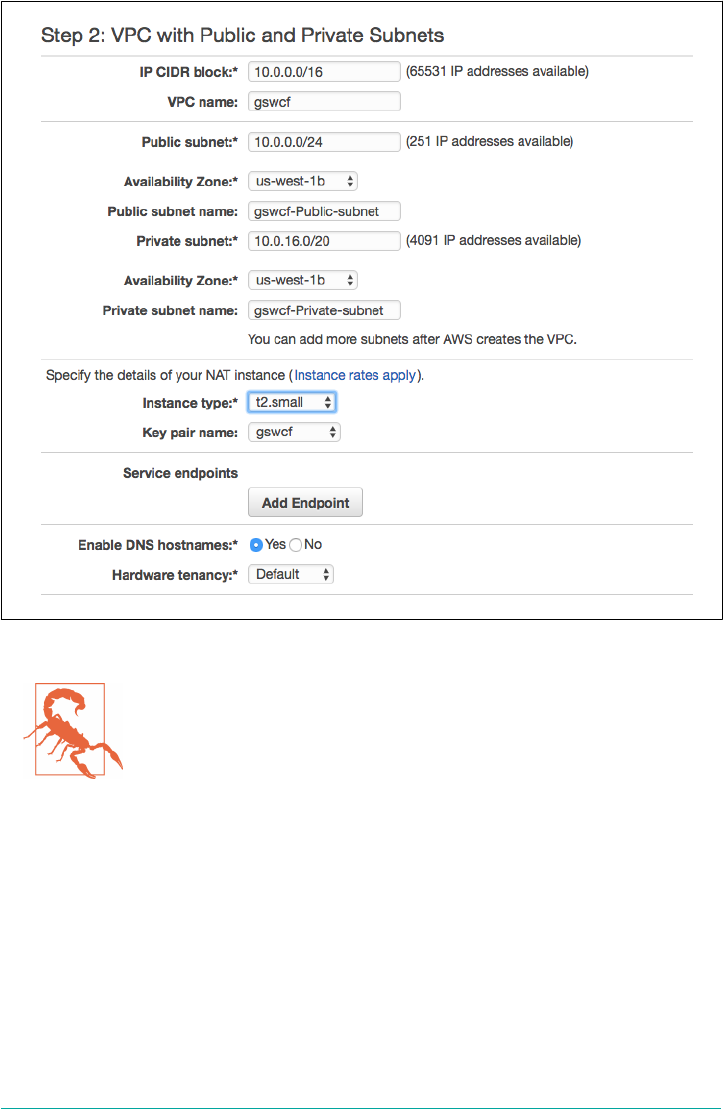

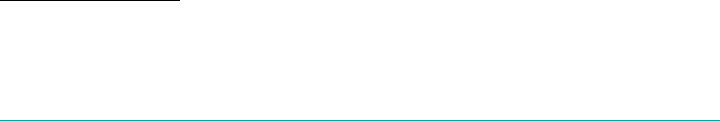

Setting Up an AWS VPC 55

Jumpbox 57

Networking Design and Routing 58

Using Static IPs 59

Subnets 60

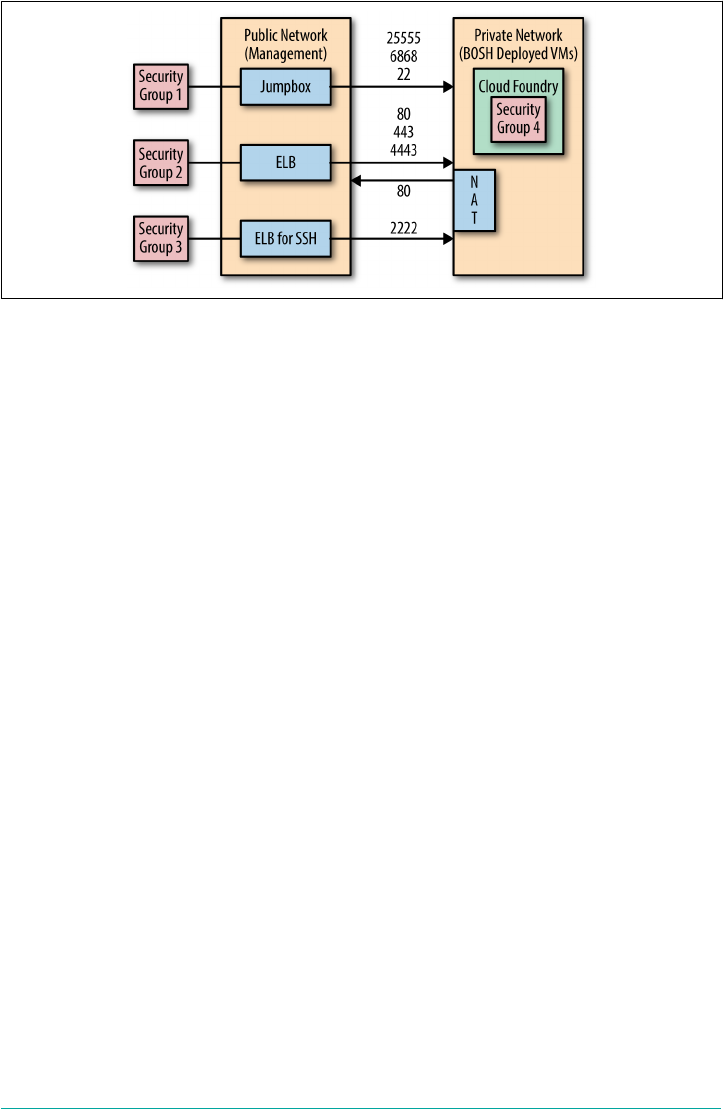

Security Groups 61

Setting Up the Load Balancer 62

Setting Up Domains and Certificates 62

Summary 63

5. Installing and Configuring Cloud Foundry 65

Installation Steps 66

Installing Cloud Foundry 67

Changing Stacks 73

Growing the Platform 73

Validating Platform Integrity in Production 73

Start with a Sandbox 73

Production Verification Testing 74

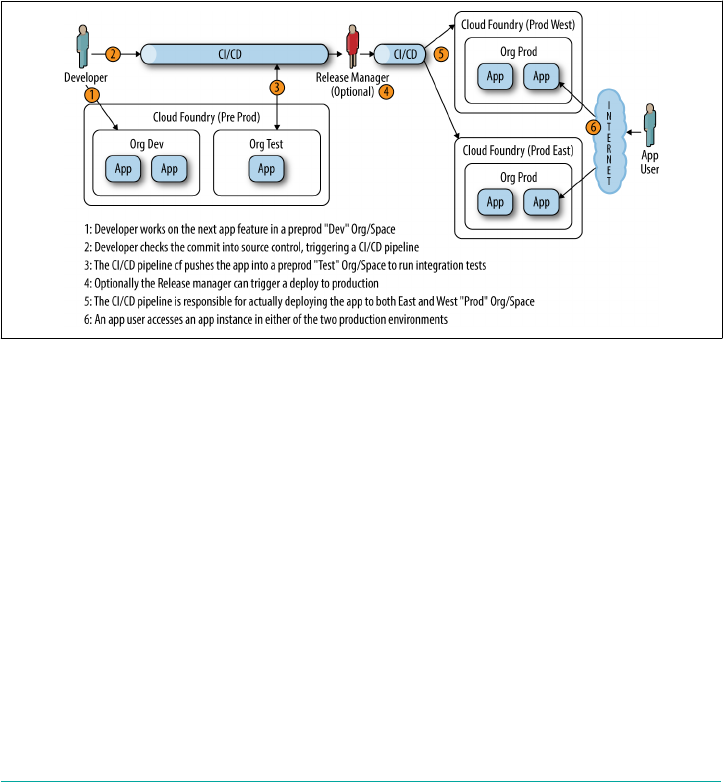

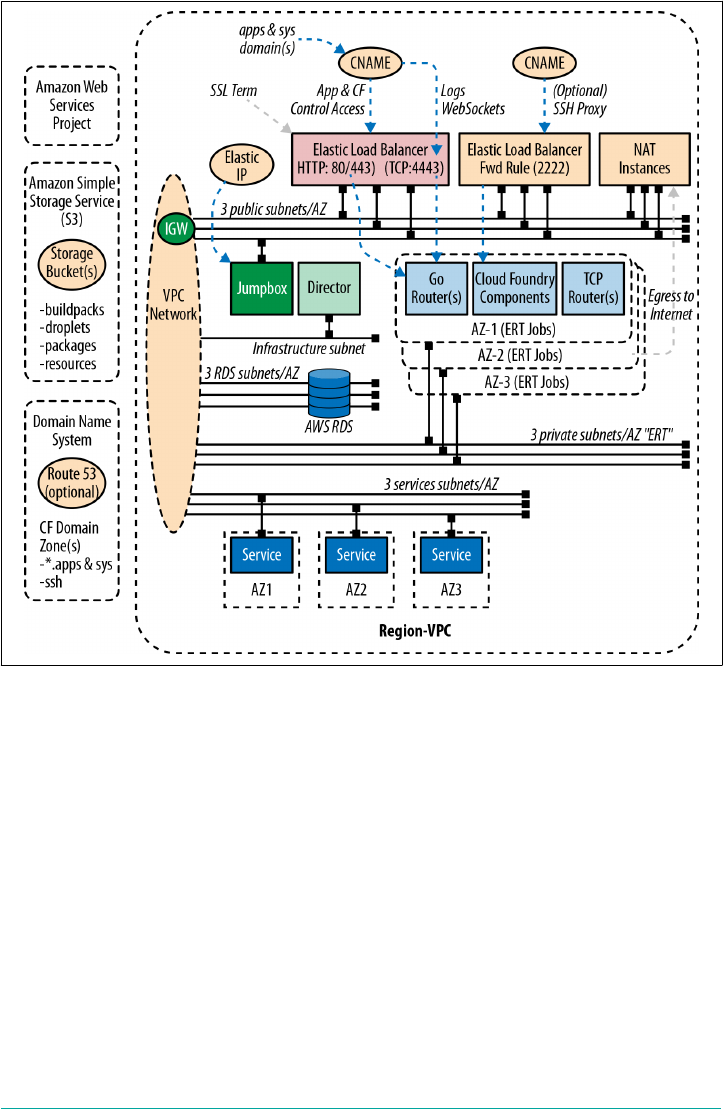

Logical Environment Structure 75

Pushing Your First App 77

Summary 77

6. Diego 79

Why Diego? 79

A Brief Overview of How Diego Works 82

Essential Diego Concepts 83

Action Abstraction 84

Composable Actions 85

Layered Architecture 87

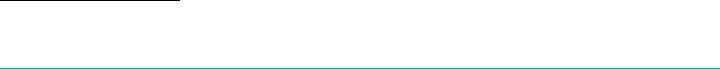

Interacting with Diego 88

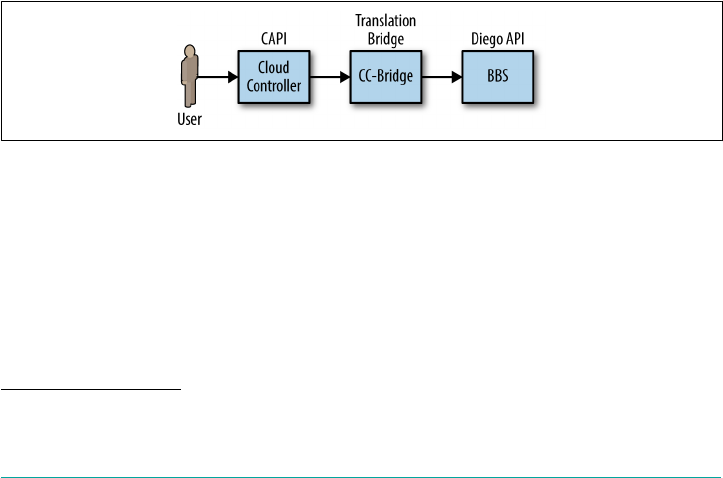

CAPI 89

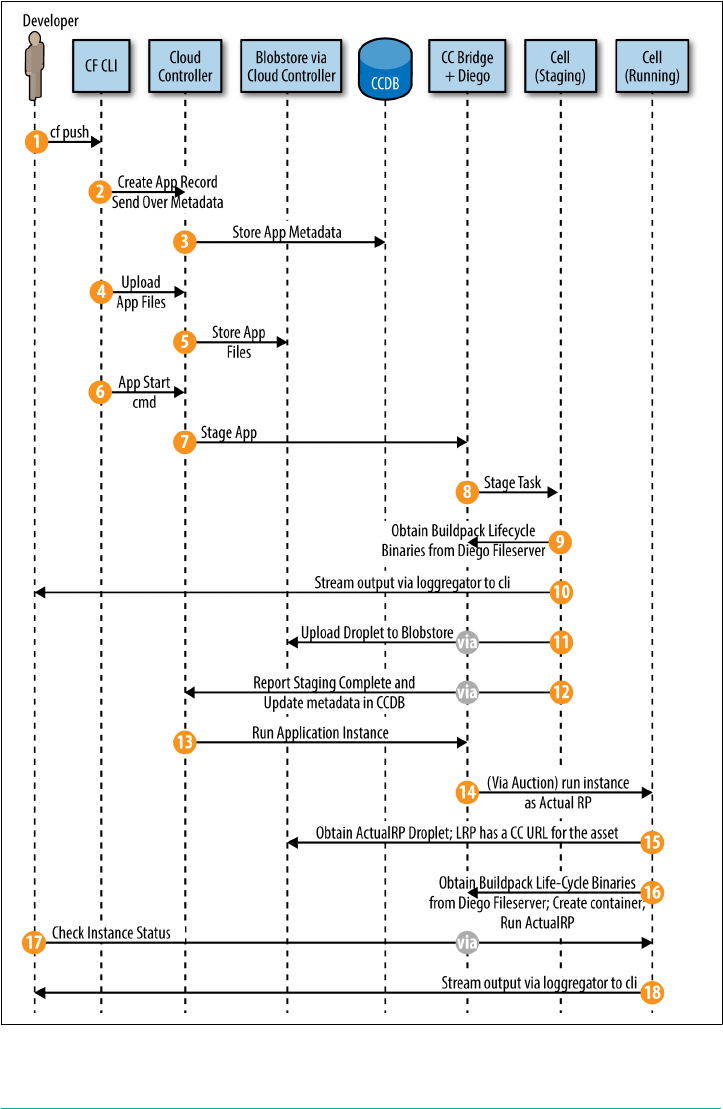

Staging Workflow 90

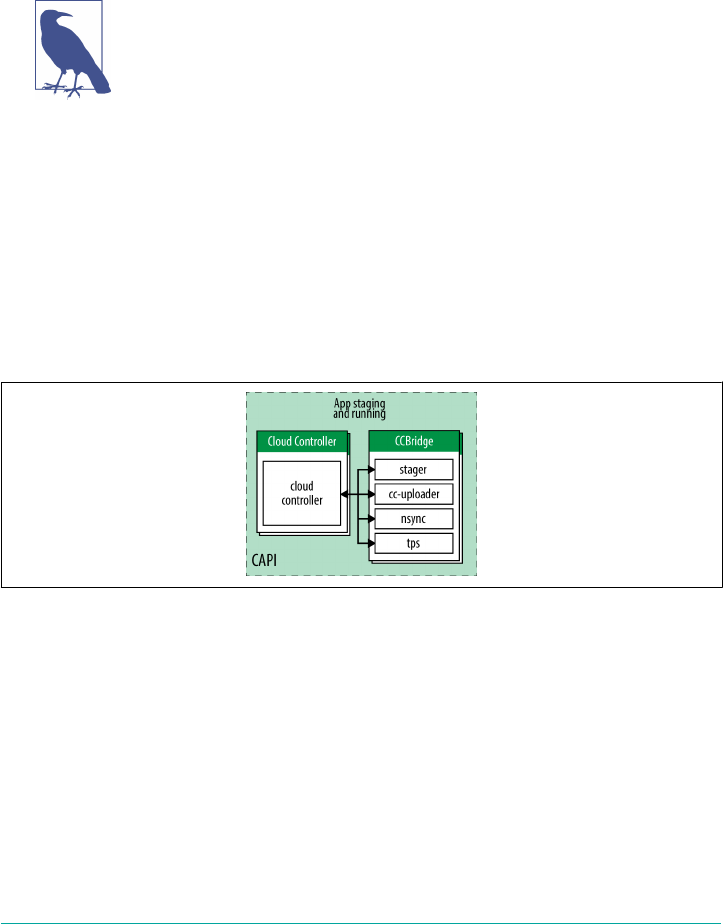

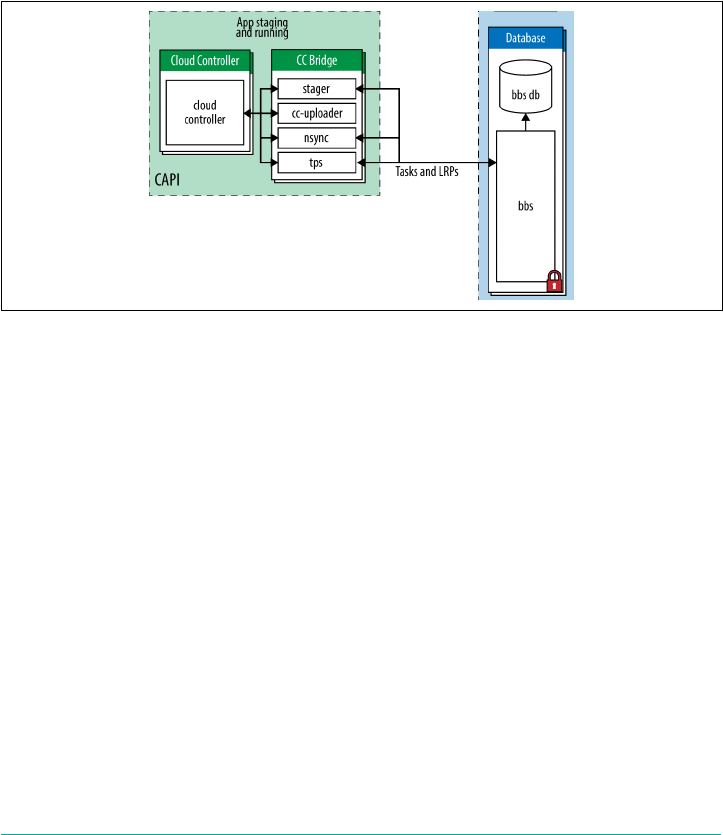

The CC-Bridge 93

Logging and Traffic Routing 97

Diego Components 97

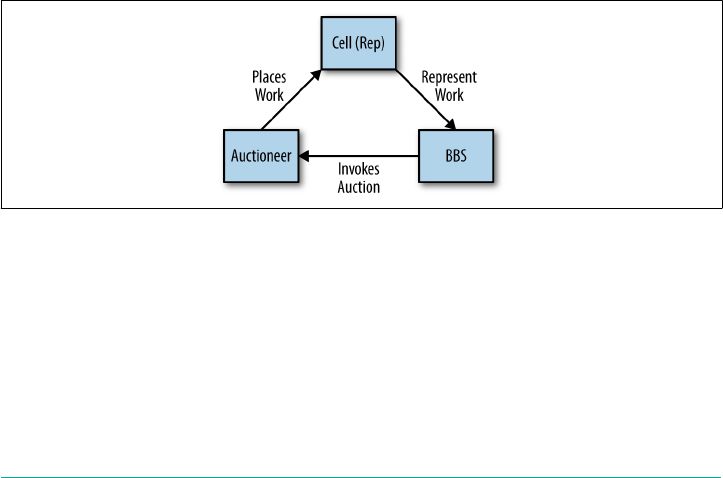

The BBS 98

Table of Contents | vii

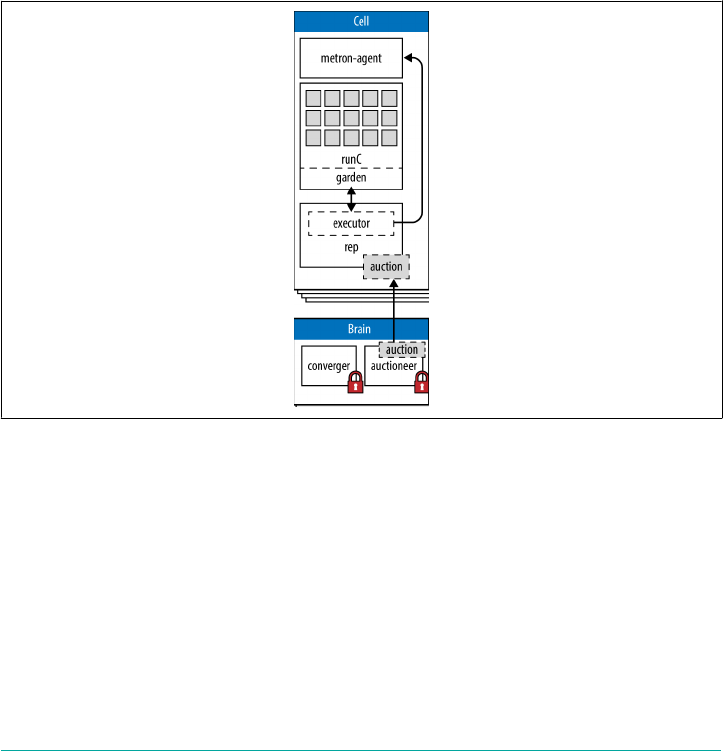

Diego Cell Components 101

The Diego Brain 104

The Access VM 106

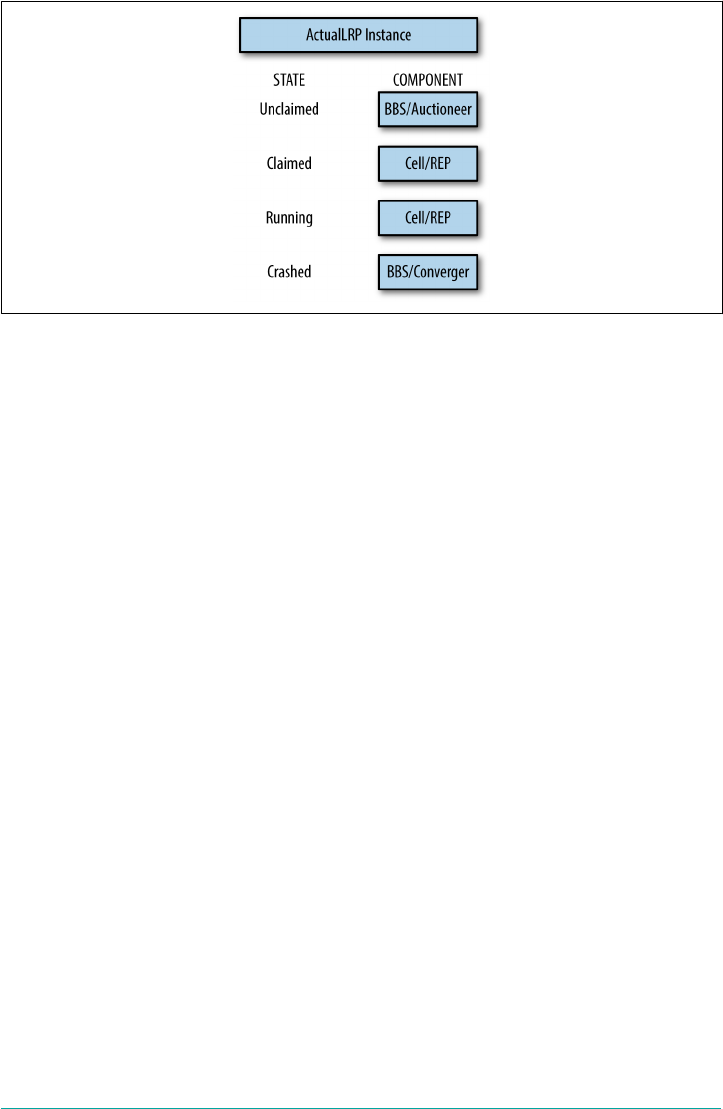

The Diego State Machine and Workload Life Cycles 107

The Application Life Cycle 109

Task Life Cycle 111

Additional Components and Concepts 111

The Route-Emitter 112

Consul 112

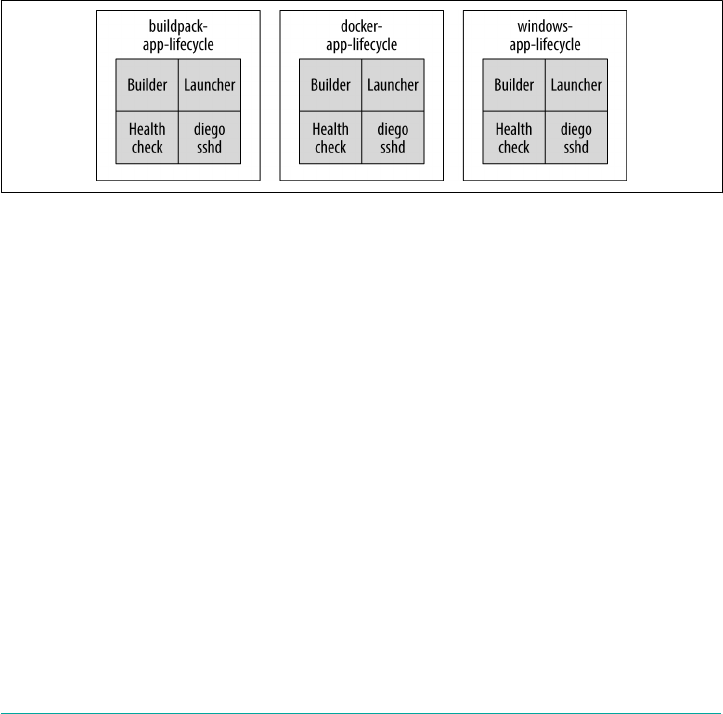

Application Life-Cycle Binaries 112

Putting It All Together 114

Summary 117

7. Routing Considerations 119

Routing Primitives 119

Routes 120

Hostnames 122

Domains 123

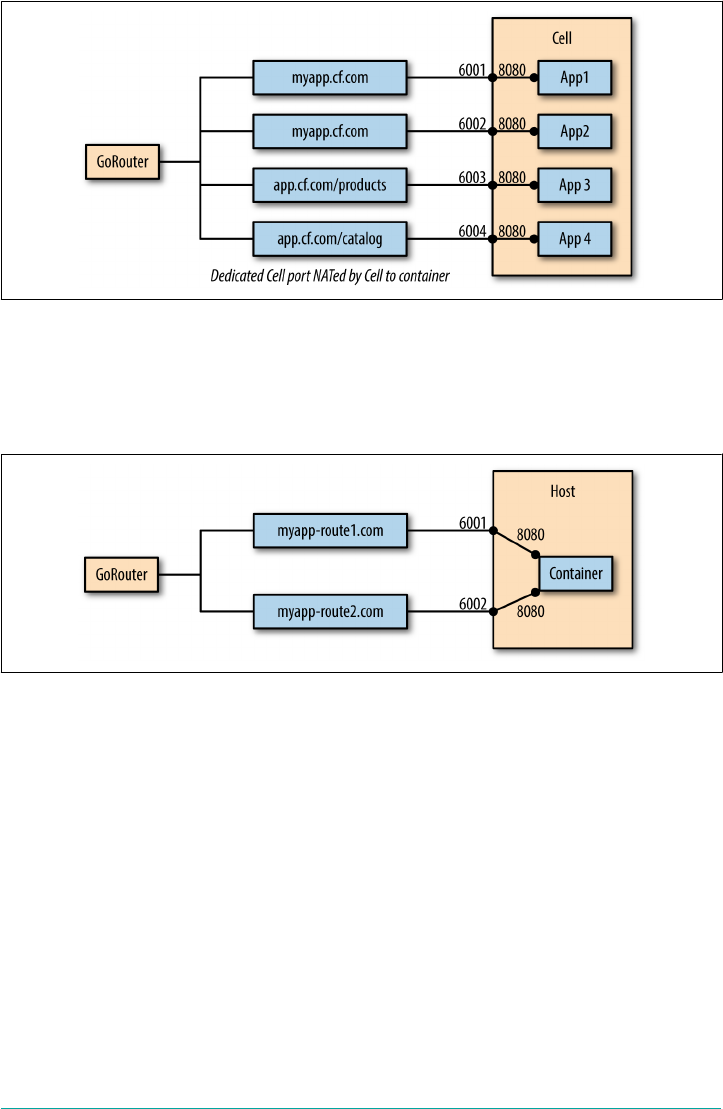

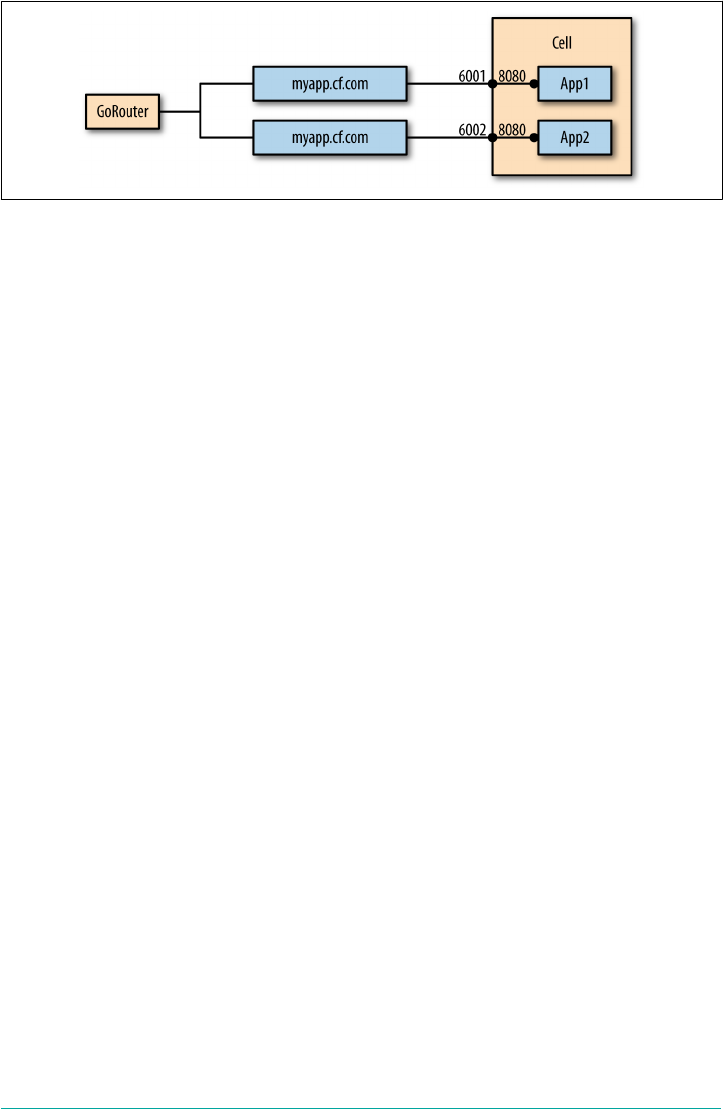

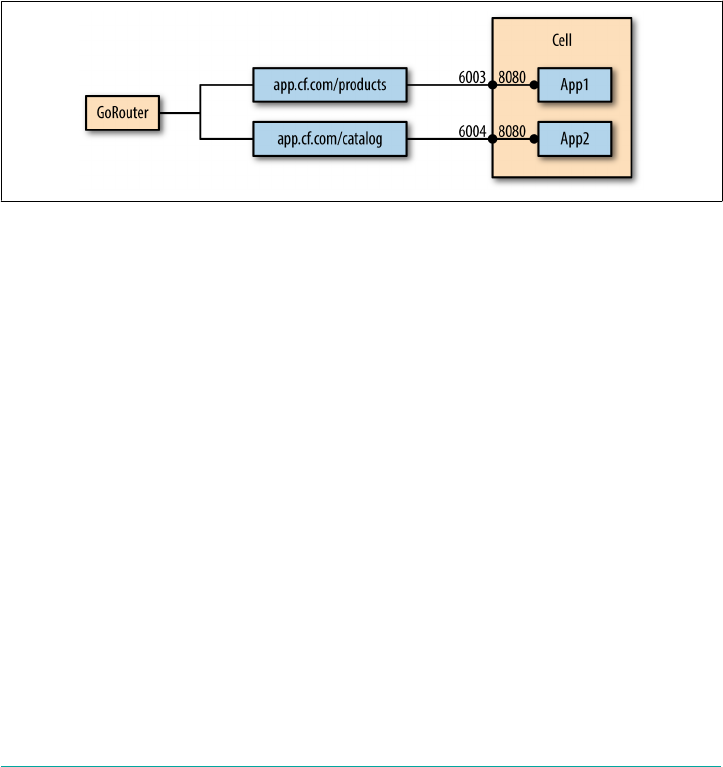

Context Path Routing 124

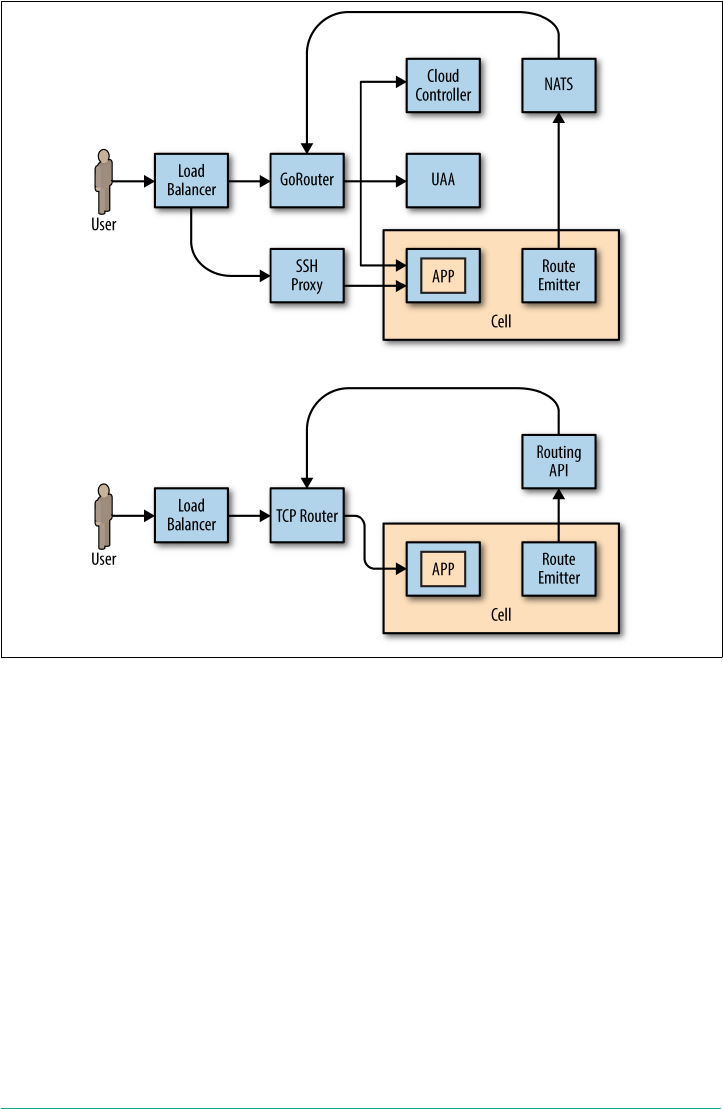

Routing Components Overview 125

Routing Flow 127

Route-Mapping Flow 127

Load Balancer Considerations 128

Setting Request Header Fields 128

WebSocket Upgrades 129

The PROXY Protocol 130

TLS Termination and IPSec 130

GoRouter Considerations 131

Routing Table 131

Router and Route High Availability 131

Router Instrumentation and Logging 132

Sticky Sessions 133

The TCPRouter 134

TCP Routing Management Plane 134

TCPRouter Configuration Steps 135

Route Services 136

Route Service Workflow 137

Route Service Use Cases 138

Summary 139

8. Containers, Containers, Containers 141

What Is a Container? 141

Container Fervor 143

Linux Containers 144

Namespaces 145

CGroups 148

Disk Quotas 148

Filesystems 148

Container Implementation in Cloud Foundry 150

Why Garden? 150

OCI and runC 151

Container Scale 153

Container Technologies (and the Orchestration Challenge) 153

Summary 154

9. Buildpacks and Docker 155

Why Buildpacks? 156

Why Docker? 157

Buildpacks Explained 158

Staging 159

Detect 160

Compile 160

Release 161

Buildpack Structure 162

Modifying Buildpacks 163

Overriding Buildpacks 163

Using Custom or Community Buildpacks 164

Forking Buildpacks 164

Restaging 164

Packaging and Dependencies 165

Buildpack and Dependency Pipelines 166

Summary 167

10. BOSH Concepts 169

Release Engineering 169

Why BOSH? 170

The Cloud Provider Interface 172

Infrastructure as Code 172

Creating a BOSH Environment 174

Single-Node versus Distributed BOSH 174

BOSH Lite 175

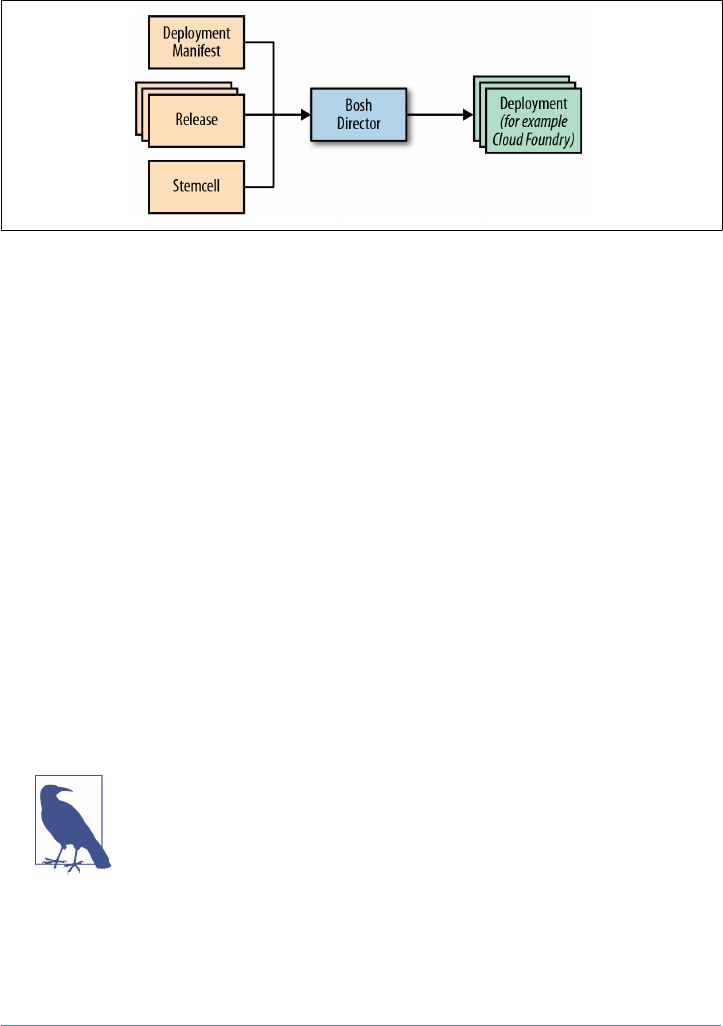

BOSH Top-Level Primitives 175

Stemcells 176

Releases 177

Table of Contents | ix

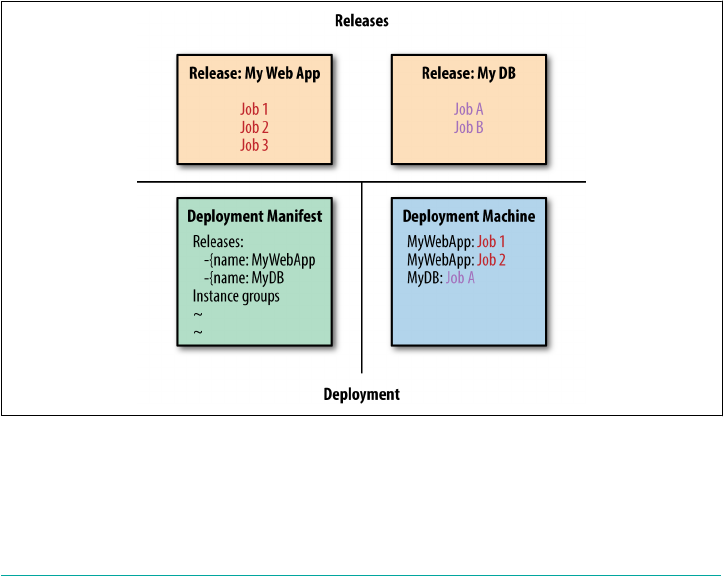

Deployments 179

BOSH 2.0 180

Cloud Configuration 180

BOSH Links 187

Orphaned Disks 188

Addons 188

Summary 188

11. BOSH Releases 191

Release Overview 191

Cloud Foundry BOSH Release 192

BOSH Director BOSH Release 192

Anatomy of a BOSH Release 192

Jobs 193

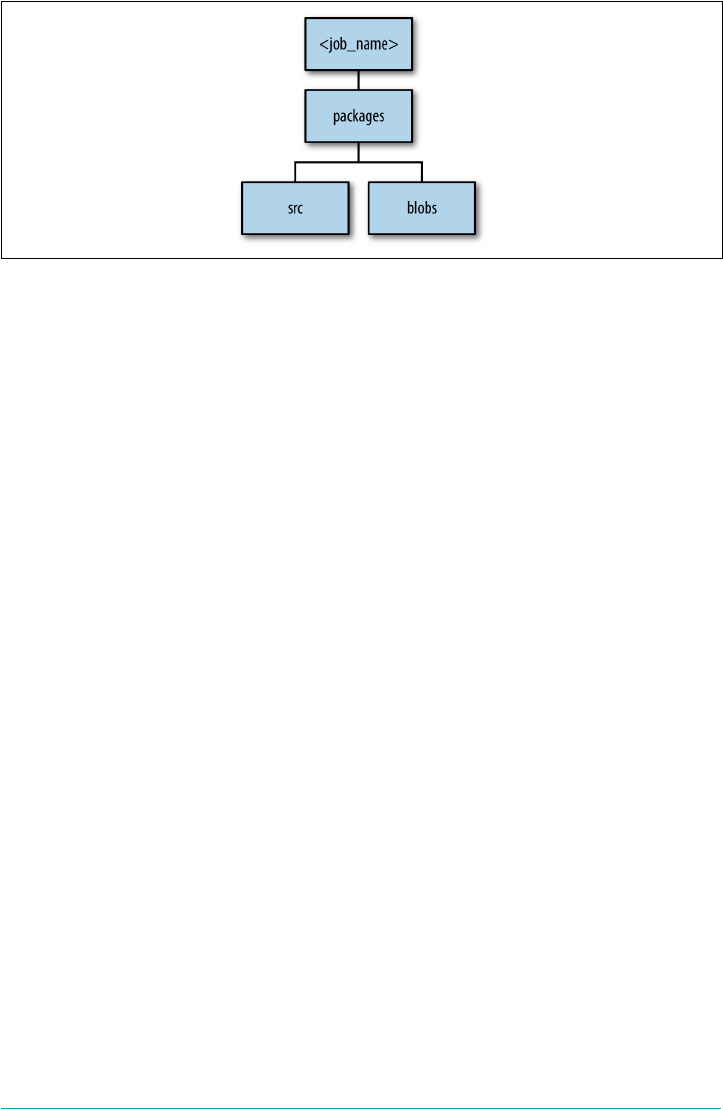

Packages 196

Src, Blobs, and Blobstores 197

Packaging a Release 199

Compilation VMs 200

Summary 200

12. BOSH Deployments 201

YAML Files 201

Understanding YAML Syntax 202

Deployment Manifests 204

Director UUID and Deployment Name 204

Release Names 205

Stemcell 205

Instance Groups 205

Properties 207

Update 208

Credentials 209

Summary 211

13. BOSH Components and Commands 213

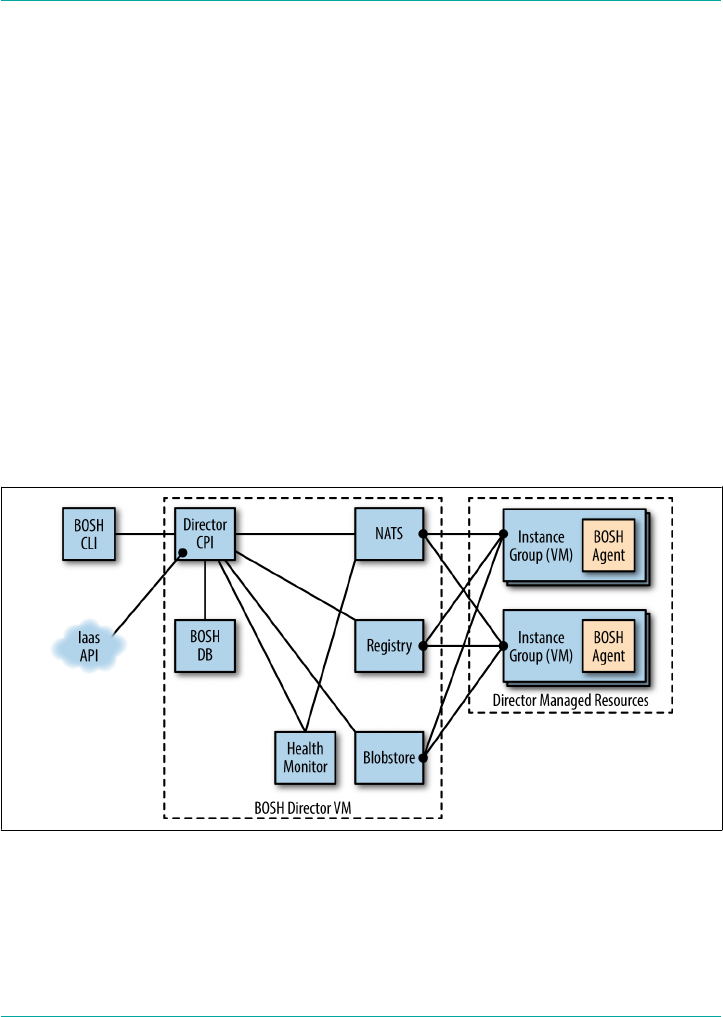

The BOSH Director 214

Director Blobstore 215

Director Task, Queue, and Workers 215

Director Database 215

Director Registry 215

BOSH Agent 215

Errand 216

The Command Line Interface 216

The Cloud Provider Interface 216

Health Monitor 216

Resurrector 217

Message Bus (NATS) 217

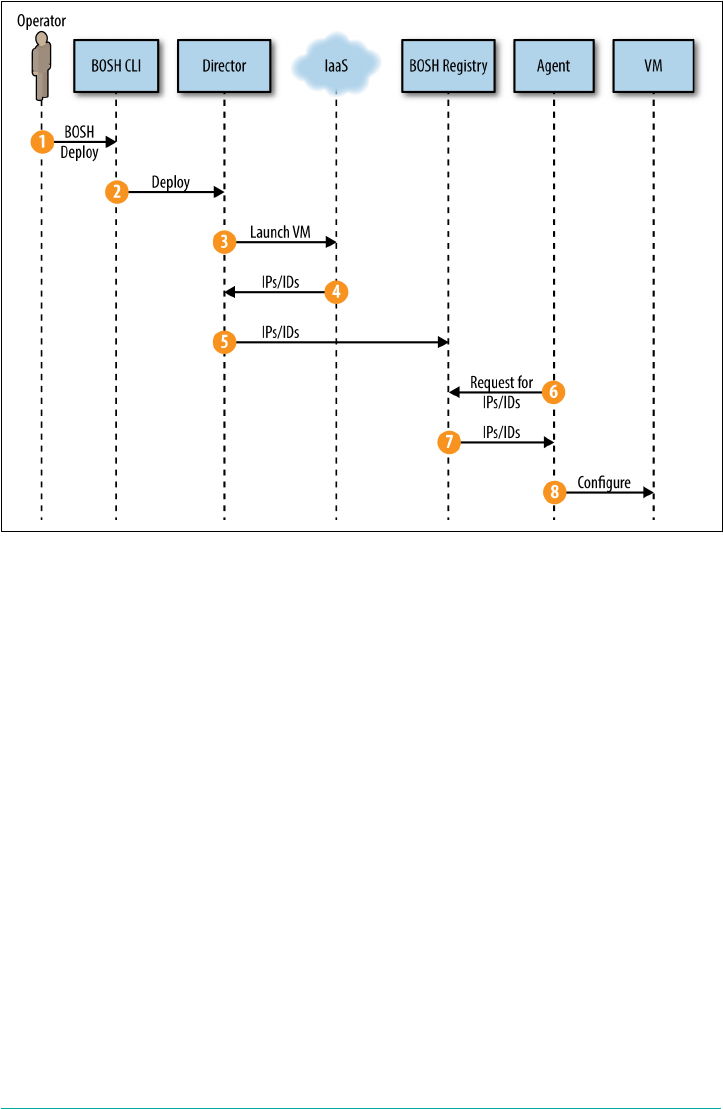

Creating a New VM 217

Disk Creation 219

Networking Definition 220

The BOSH CLI v2 221

Basic BOSH Commands 221

Summary 223

14. Debugging Cloud Foundry 225

Cloud Foundry Acceptance Tests 225

Logging 226

Typical Failure Scenarios 228

Configuration Failures 228

Infrastructure Failures 229

Release Job Process Failure 229

Scenario One: The App Is Not Reachable 230

Scenario Two: Network Address Translation Instance Deleted (Network Failure) 231

Scenario Three: Security Group Misconfiguration That Blocks Ingress Traffic 234

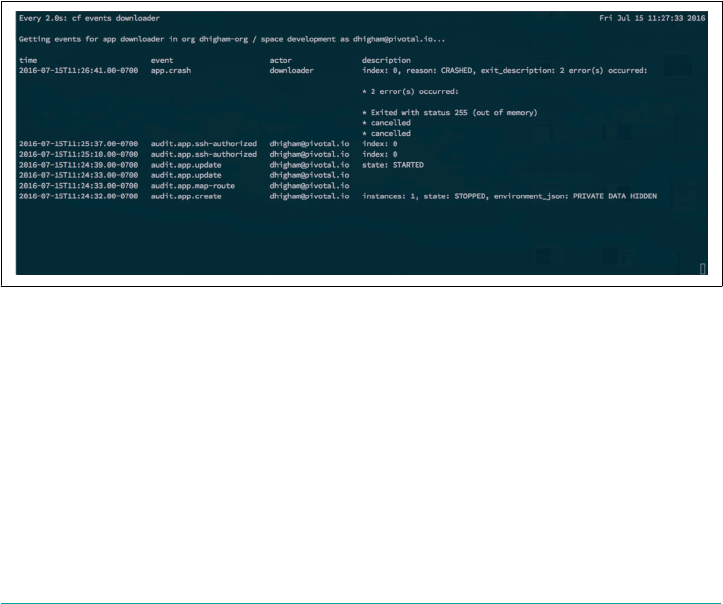

Scenario Four: Invoking High Memory Usage That Kills a Container 236

Scenario Five: Route Collision 241

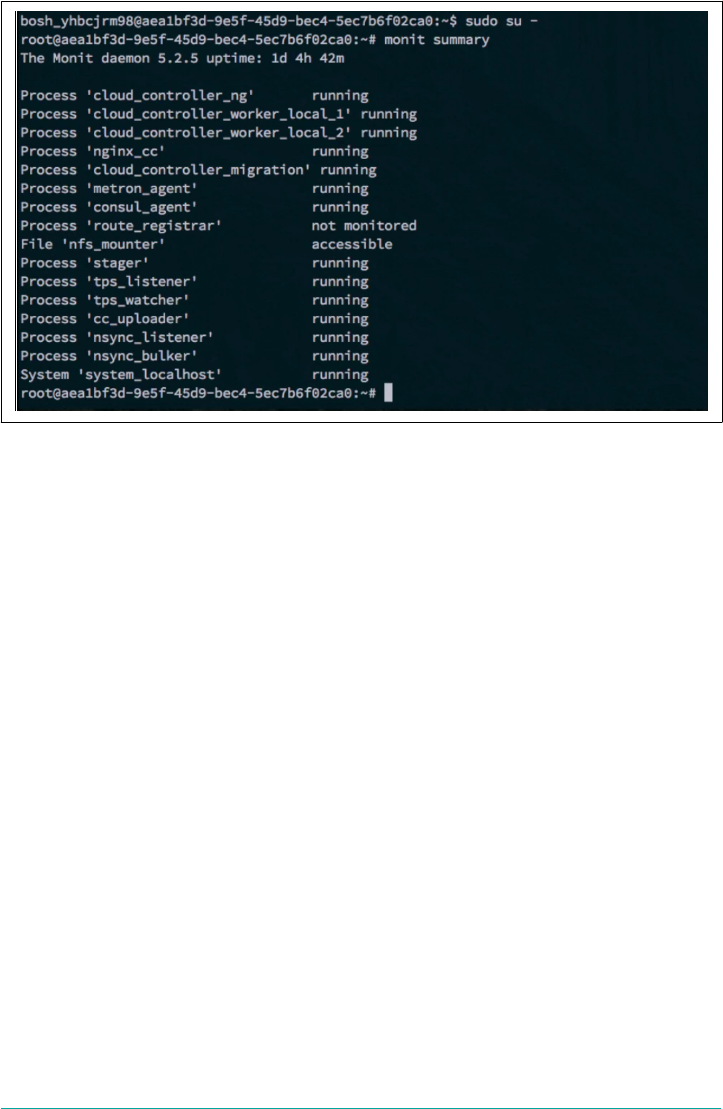

Scenario 6: Release Job Process Failures 245

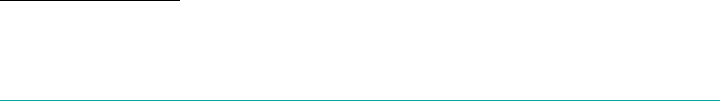

Scenario 7: Instance Group Failure 247

Summary 250

15. User Account and Authentication Management 251

Background Information 252

OAuth 2.0 252

UAA Documentation 252

UAA Release 253

UAA Responsibilities 253

Securing Cloud Foundry Components and API Endpoints 253

Securing Service Access for Apps 254

UAA Architecture and Configuration Within Cloud Foundry 255

Instance Groups Governed by the UAA 255

UAA Instance Groups 255

UAA Database 256

UAA Runtime Components 256

UAA Logging and Metrics 256

Keys, Tokens, and Certificate Rotation 257

User Import 258

Roles and Scopes 259

Scopes 259

Roles 259

Summary 261

16. Designing for Resilience, Planning for Disaster 263

High Availability Considerations 263

Extending Cloud Foundry’s Built-In Resiliency 265

Resiliency Through Multiple Cloud Foundry Deployments 266

Resiliency Through Pipelines 267

Data Consistency Through Services 267

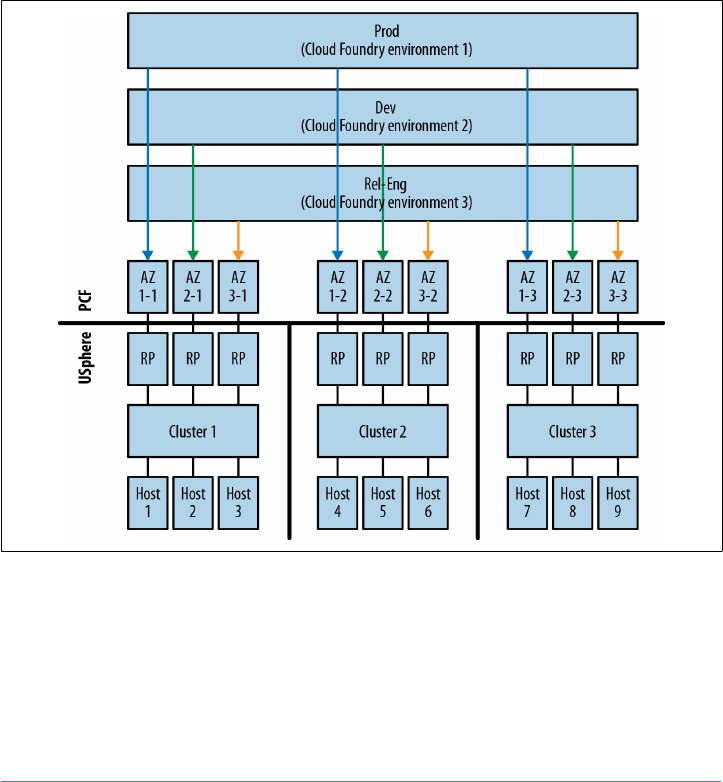

HA IaaS Configuration 268

AWS Failure Boundaries 268

vCenter Failure Boundaries 269

Backup and Restore 272

Restoring BOSH 273

Bringing Back Cloud Foundry 274

Validating Platform Integrity in Production 274

Start with a Sandbox 275

Production Verification Testing 275

Summary 276

17. Cloud Foundry Roadmap 277

v3 API 277

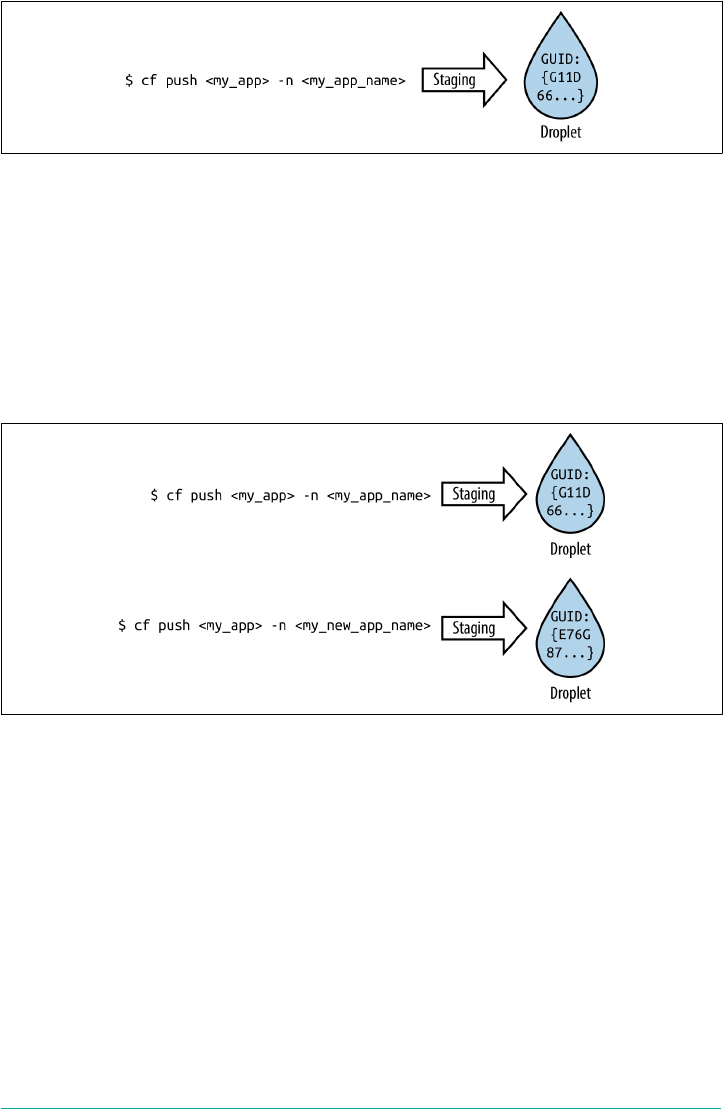

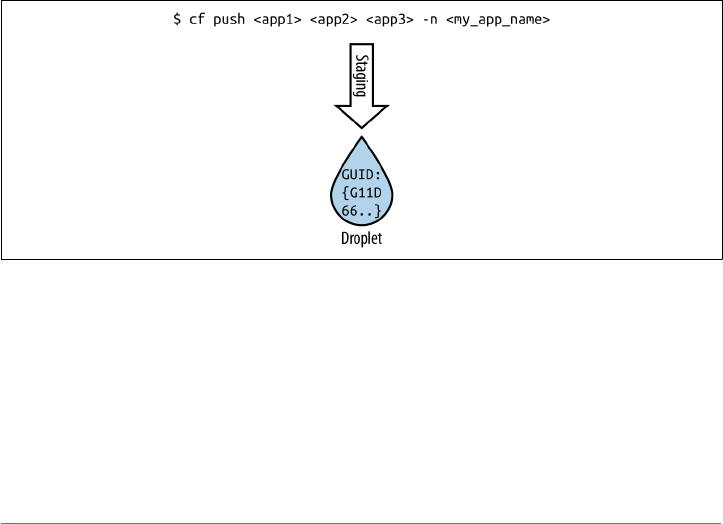

Multiple Droplets per App 277

Multiple Apps per Droplet (Process Types) 280

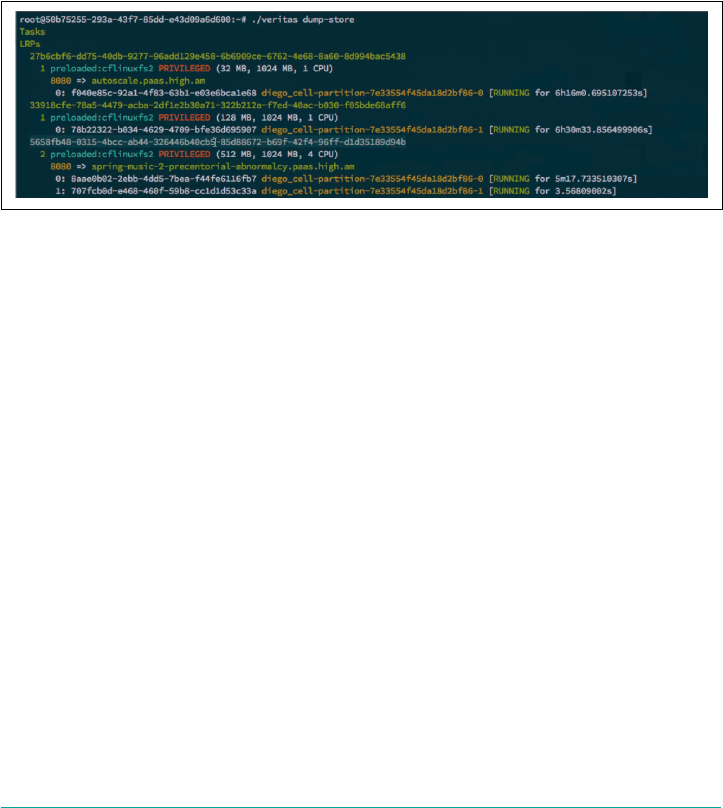

Tasks 280

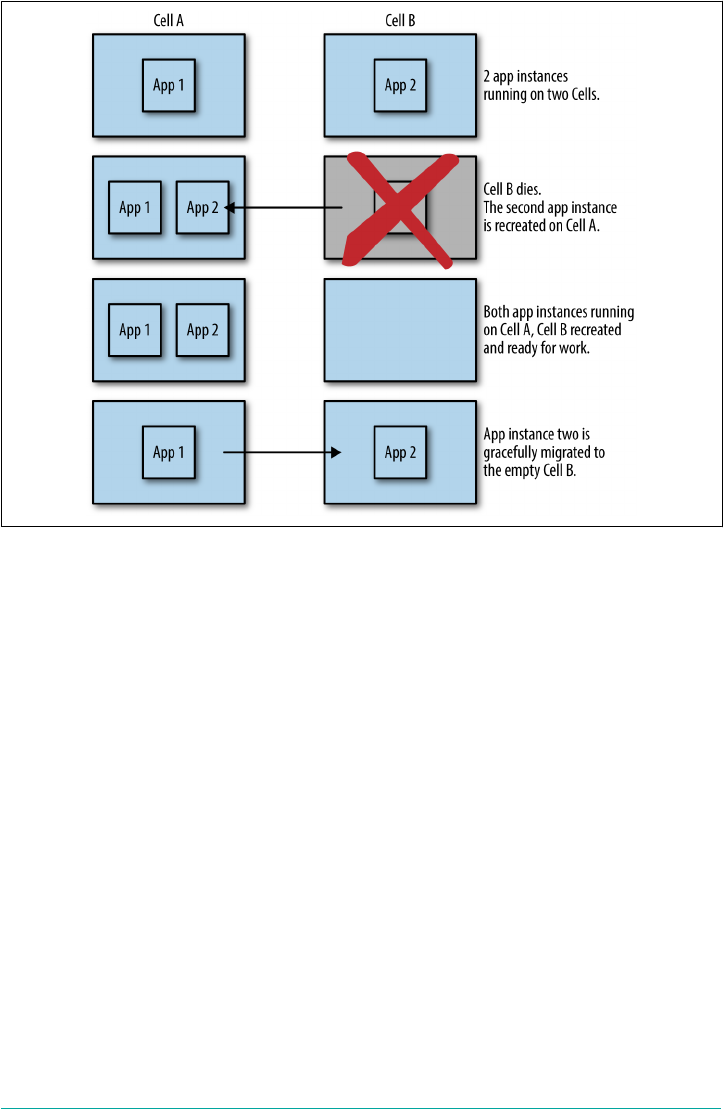

Diego Scheduling 281

Cell Rebalancing 281

Boulders 282

Tracing 283

Containers 283

Network Shaping 283

Container Snapshots 283

Container-to-Container Networking 283

Traffic Resiliency 284

Buildpacks and Staging 284

Multibuildpacks 285

Post-Staging Policy or Step 285

Compiler-Less Rootfs and Stemcells 285

Foreword

When we think of transformative innovation, it’s easy for our minds to grasp the tangible and overt technologies: the television, the personal computer, the smartphone. These inventions are visible, material commodities that serve a physical purpose in our lives. These technologies start small and then eventually gain widespread adoption, at which point they change the way we interact and engage with the technology, and often with the world around us. When we talk about strides in technology to most people, these are the gadgets they envision: separate objects that can be picked up, plugged in, and turned off.

But for those of us who are quietly enabling digital transformation across multiple industries, we know what innovation can look like. It can be invisible and intangible: the velocity behind a high dive into the pool. The operators and developers of the world no longer reside in the technology aisle. You are leading the change at every kind of company across every industry. It’s one thing to demonstrate how a printing press increases the pace of printing papers exponentially. It’s another thing entirely to explain how a platform that is not visible has the ability to transform a company’s ability to compete in a quickly changing marketplace. This book is a resource for you as you lead the digital revolution within your organization.

This is undoubtedly a technical book devoted to the underlying concepts of Cloud Foundry and how it works, but it is emblematic of something larger at play. The author, Duncan Winn, has spent a career helping customers achieve more with technology. Most recently, at Pivotal, he helped customers implement, deploy, and get apps up and running on Cloud Foundry. He saw such incredible results that he took it upon himself to begin the project of cataloging, explaining, and evangelizing the technology. Duncan saw the monumental benefit of Cloud Foundry to everyone, from the business itself right down to the developer. He saw how cloud-native application, architecture, and development are driving and accelerating digital innovation, and that Cloud Foundry was the invisible platform that could take this process from days to minutes.

Cloud Foundry is dedicated to improving the ability of developers to code and deploy new applications. The collaborative nature of this open source project facilitates cooperative, interactive creation, driving innovation. A platform that expedites the deployment of applications does so with the understanding that an iterative approach to development enables a user-first mentality. Cloud Foundry’s support of continuous delivery empowers developers to iterate applications based on user feedback, eliminating the need for late-night adjustments during limited change windows. It minimizes risk around release failure because incremental change is easier to perform and less drastic. In short, Cloud Foundry makes a developer’s job faster and easier.

Cloud Foundry is the standard for application platforms with the noble vision of unifying the market for enterprise software. Cloud Foundry: The Definitive Guide is an integral rulebook for building the software of the future and maintaining the momentum of digital transformation across industries. The power of open source is self-evident in the potency of Cloud Foundry, with a commitment to sharing and continuous innovation.

It is my hope that you use this book as your digital transformation encyclopedia. Read it, revisit it, learn from it, and challenge it. Cloud Foundry is for you.

— Abby Kearns

Executive Director of Cloud Foundry Foundation

Preface

Cloud Foundry is a platform that helps you develop and deploy applications and tasks with velocity. Velocity, as a vector quantity, is different from raw speed because it is direction aware. In our case, direction is based on user feedback. Application velocity allows you to adopt an iterative approach to development through repeatedly gaining fast feedback from end users. Ultimately, this approach allows you to align your products, user requirements, and expectations. This book covers Cloud Foundry’s technical concepts, providing a breakdown of the various platform components and how they interrelate. It also will walk you through a typical setup of BOSH (a release-engineering tool chain) and Cloud Foundry, and unpack the broader considerations of adopting Cloud Foundry for enterprise workloads.

Like all distributed systems, Cloud Foundry involves various levels of complexity. Complexity is fine if it is well defined. Cloud Foundry does an excellent job defining its internal complexity by providing explicit boundaries between each of its components. For the most part, this removes the need for the platform operator to deal with Cloud Foundry’s internal complexity. However, there are still many other factors to consider when running Cloud Foundry; for example, choosing your underlying infrastructure, defining your networking architecture, and establishing your resiliency requirements. These concerns are environmental, relating to the ecosystem in which Cloud Foundry resides. Getting these concerns right is essential for reliably handling business critical workloads. Cloud Foundry: The Definitive Guide aims to tackle these environmental considerations and decision points that are required for configuring and running Cloud Foundry at scale.

In addition to unpacking the various considerations required for running the technology, this book also explores the concepts surrounding Cloud Foundry. My goal is to provide the necessary content for understanding the following:

• Cloud Foundry’s underlying concepts

• How Cloud Foundry works, including the flow of communication between the distributed components

• How to begin deploying BOSH and Cloud Foundry, including the key configuration decision points

An understanding of how Cloud Foundry works is vital if you are running business-critical applications, services, and workloads on the platform. Knowing the role of each component, along with the flow of communication between components, is equally important for troubleshooting platform issues.

Who Should Read This Book

My hope is that you are reading this book because you already believe Cloud Foundry will enable you to deliver software with higher velocity. If you are unfamiliar with the high-level concepts of Cloud Foundry and what it enables you to achieve, I suggest (shameless plug, I know) that you begin by reading Cloud Foundry: The Cloud-Native Platform. The purpose of that book is to provide an understanding of why you should care about using a platform to achieve application velocity.

This book is primarily aimed at Cloud Foundry operators who are responsible for installing, configuring, and operating Cloud Foundry. Cloud Foundry significantly simplifies the operational concerns of running applications and services. For example, imagine not having to provision infrastructure for every new project, and systematically repaving all your deployed machines via rolling upgrades with zero downtime. Empowering developers to deliver software with significantly less complexity and overhead is powerful. However, configuring and running Cloud Foundry itself can involve some complexity.

The team responsible for operating Cloud Foundry is often known as the platform operations team. This team’s responsibility includes deploying and operating Cloud Foundry so that application developers can take advantage of its capabilities.

Typical expertise required for the platform operations team is very broad. A list of required roles and skills is discussed at length in “Team Structure: Platform Operations for the Enterprise” on page 44. The list is diverse because Cloud Foundry leverages different technology layers across your infrastructure. Many platform operators have expertise with a subset of these disciplines. The challenge of adopting technology that touches so many varied layers is understanding each layer and how it should be configured to interact with the others. An additional objective of this book is therefore to enable platform operators to quickly expand their areas of expertise, and to gain understanding of the salient points of any unfamiliar territory.

The approaches in this text come from real experience with numerous companies from many different industries. The companies I have worked with all have embarked on a journey toward establishing Cloud Foundry at scale. If you are looking for a way of introducing Cloud Foundry into your development and deployment pipeline, this book is for you.

Cloud Foundry also makes developers’ lives easier, abstracting away the middleware, OS, and infrastructure concerns so that they can focus on just their application and desired backing services. Using Cloud Foundry is straightforward and sufficiently covered in the Cloud Foundry documentation. Therefore, developer usage of Cloud Foundry is not a focus of this book. With that said, many developers I have worked with find Cloud Foundry’s technology interesting and often desire a deeper understanding of the operational aspects detailed in this book.

This is a technically focused book intended for platform operators. Therefore, you should have some of the following basic system administrative skills:

• Know how to open a terminal window in your OS of choice

• Know how to install software tools such as command-line interfaces (CLIs)

• Know how to use secure shell (SSH) and work with virtual machines (VMs)

• Know how to work with source code from GitHub by both downloading and installing it (for Mac users, Homebrew should be your go-to tool here)

When I talk about specific Cloud Foundry tools such as BOSH, I will link you to an appropriate download location (often a GitHub repository). You can then follow the instructions in the linked repositories. For Mac users, you can also install most Cloud Foundry tools via Homebrew.

Why I Wrote This Book

As a platform architect for Pivotal, I have worked with numerous companies from various industries to help them install and configure Cloud Foundry. Like most platform operators, I began with knowledge of a subset of the technology; everything else I learned on the job. In my early days with Cloud Foundry, there were two key things that would have helped me:

• An understanding of the critical configuration considerations for both the platform and the underlying distributed infrastructure

• A reference architecture detailing the rationale and trade-offs for all implementation decisions

To address the first point, Cloud Foundry has forged a fantastic collaborative community from numerous companies and industries. I have been fortunate to work alongside an incredibly talented team with a diverse skill set, both within Pivotal and from other companies within the Cloud Foundry community. The reason I wrote this book is to document the best practices and considerations I have learned through working with Cloud Foundry.

Regarding the second point, as a consultant working across numerous industries, I see the same issues and questions coming up with every new engagement. It is therefore my hope that this book will explain the basic reference architecture for Cloud Foundry deployments, including detailing the rationale and trade-offs for all implementation decisions.

A Word on Cloud-Native Platforms

Cloud Foundry is a cloud-native platform. Such platforms are designed to do more for you so that you can focus on what is important: delivering applications that directly affect your business. Specifically, cloud-native platforms are designed to do more (including reliably and predictably running and scaling applications) on top of potentially unreliable cloud-based infrastructure. If you are unfamiliar with the high-level concepts of Cloud Foundry and what it enables you to achieve, you should begin by reading Cloud Foundry: The Cloud-Native Platform.

Online Resources

There are some great online references that you should familiarize yourself with as you embark on your Cloud Foundry journey:

• The Cloud Foundry Foundation

• Bosh.io

• The cf-deployment GitHub repository

• Cloud Foundry’s continuous integration tool Concourse

Conventions Used in This Book

The following typographical conventions are used in this book:

Italics

Indicates new terms, URLs, email addresses, filenames, and file extensions.

Constant width

Used for program listings, as well as within paragraphs to refer to program elements such as variable or function names, databases, data types, environment variables, statements, and keywords.

Constant width bold

Shows commands or other text that should be typed verbatim by the user.

Constant width italics

Shows text that should be replaced with user-supplied values or by values determined by context.

This icon signifies a tip, suggestion, or general note.

This icon indicates a warning or caution.

This icon indicates an item to take note of.

Sidebar

Sidebars are used to provide some additional context to the main text.

Command prompts always start with $, for example:

$ cf push

O’Reilly Safari

Safari (formerly Safari Books Online) is a membership-based training and reference platform for enterprise, government, educators, and individuals.

Members have access to thousands of books, training videos, Learning Paths, interactive tutorials, and curated playlists from over 250 publishers, including O’Reilly Media, Harvard Business Review, Prentice Hall Professional, Addison-Wesley Professional, Microsoft Press, Sams, Que, Peachpit Press, Adobe, Focal Press, Cisco Press, John Wiley & Sons, Syngress, Morgan Kaufmann, IBM Redbooks, Packt, Adobe Press, FT Press, Apress, Manning, New Riders, McGraw-Hill, Jones & Bartlett, and Course Technology, among others.

For more information, please visit http://oreilly.com/safari.

How to Contact Us

Please address comments and questions concerning this book to the publisher:

O’Reilly Media, Inc.

1005 Gravenstein Highway North

Sebastopol, CA 95472

800-998-9938 (in the United States or Canada)

707-829-0515 (international or local)

707-829-0104 (fax)

To comment or ask technical questions about this book, send email to bookquestions@oreilly.com.

For more information about our books, courses, conferences, and news, see our website at http://www.oreilly.com.

Find us on Facebook: http://facebook.com/oreilly

Follow us on Twitter: http://twitter.com/oreillymedia

Watch us on YouTube: http://www.youtube.com/oreillymedia

Acknowledgments

One of the things I love about Cloud Foundry is its community. It is genuinely collaborative, and many people within the community have invested both time and expertise helping to shape the content and accuracy of this book. A brief section is not enough to encapsulate the extent to which my friends, family, and colleagues have helped me, but I will most certainly mention their names. Due to the breadth of support and the time it took to write this book, I have a sinking feeling that I’ve missed someone really important, in which case I apologize.

Various product managers and subject matter experts were incredibly generous with their time, both upfront to go deep on specific topics, and later on reviewing the relevant sections at length. In chapter order, I would like to thank: David Sabeti and Evan Farrar on Chapter 5; Eric Malm and Brandon Shroyer on Diego; Shannon Coen on Routing; Will Pragnell, Glyn Normington, and Julian Friedman on Containers; Ben Hale on Buildpacks; Dmitriy Kalinin on BOSH; Dan Higham on Debugging; Allen Duet and Mark Alston on Logging; Sree Tummidi and Filip Hanik on UAA; Haydon Ryan and Sean Keery on HA and DR; and Dieu Cao on the final Summary.

Numerous colleagues provided incredibly valuable input and tech reviews, including Matthew Stine, James Bayer, Onsi Fakhouri, Robert Mee, Graham Winn, Amit Gupta, Ramiro Salas, Ford Donald, Merlin Glynn, Shaozhen Ding, John Calabrese, Caleb Washburn, Ian Zink, Keith Strini, Shawn Neal, Rohit Kelapure, Dave Wallraff, David Malone, Christopher Umbel, Rick Farmer, Stu Radnidge, Stuart Charlton, Jim Park, Alex Ley, Daniel Jones, and Dr. Nick Williams, along with many folks at Stark and Wayne.

Most of the material in this book was derived from experiences in the trenches, and there are many people who have toughed it out in those trenches alongside me. Several of the people already mentioned belong in this category, but in addition, I would like to thank Raghvender Arni, Mark Ruesink, Dino Cicciarelli, Joe Fitzgerald, and Matt Russell for their superb guidance and for establishing excellent teams in which I had the good fortune to work.

Thanks also to my good friend Vinodini Murugesan for her excellent editing. Special

thanks to my mother, who always invested in my education, and to my father, who

provided tireless coaching and feedback throughout my career; you both inspired me

to do what I love to the best of my ability. And, finally, and most importantly, thanks

to my wonderful wife, Tanya Winn, for her endless understanding and support in all

my endeavors.

CHAPTER 1

The Cloud-Native Platform

Cloud Foundry is a platform for running applications, tasks, and services. Its purpose

is to change the way applications, tasks, and services are deployed and run by

significantly reducing the develop-to-deployment cycle time.

As a cloud-native platform, Cloud Foundry directly uses cloud-based infrastructure

so that applications running on the platform can be infrastructure unaware. Cloud

Foundry provides a contract between itself and your cloud-native apps to run them

predictably and reliably, even in the face of unreliable infrastructure.

If you need a brief summary of the benefits of the Cloud Foundry platform, this

chapter is for you. Otherwise, feel free to jump ahead to Chapter 2.

Why You Need a Cloud-Native Platform

To understand the business reasons for using Cloud Foundry, I suggest that you

begin by reading Cloud Foundry: The Cloud-Native Platform, which discusses the

value of Cloud Foundry and explores its overall purpose from a business perspective.

Cloud Foundry is an “opinionated” (more on this later in the chapter), structured

platform that imposes a strict contract between the following:

• The infrastructure layer underpinning it

• The applications and services it supports

Cloud-native platforms do far more than provide developers self-service resources

through abstracting infrastructure. Chapter 2 discusses at length their inbuilt fea‐

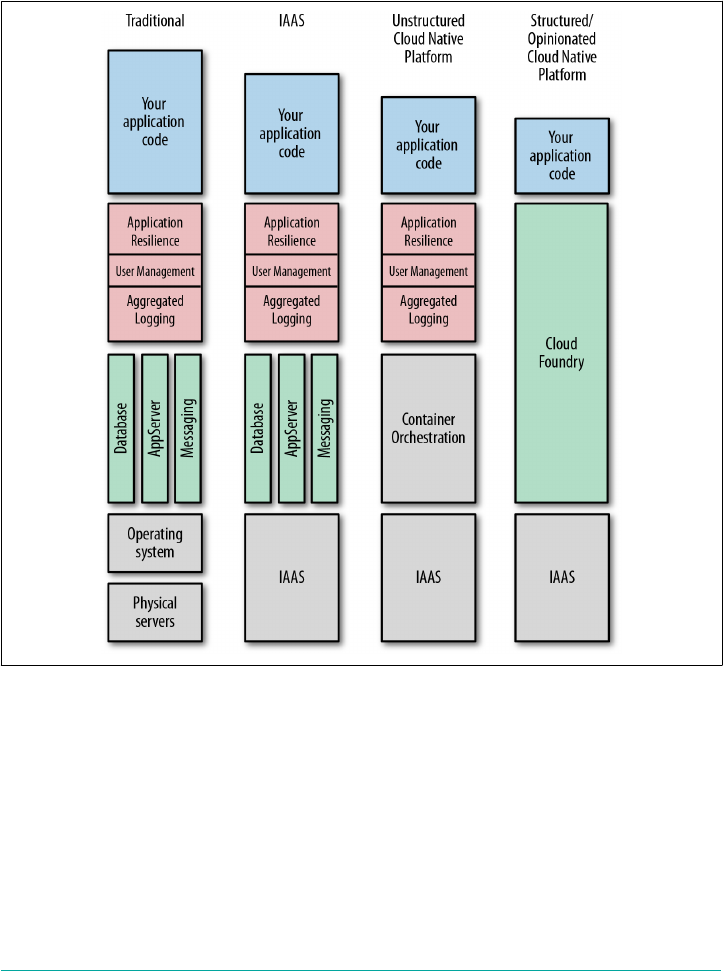

tures, such as resiliency, log aggregation, user management, and security. Figure 1-1

shows a progression from traditional infrastructure to Infrastructure as a Service

(IaaS) and on to cloud-native platforms. Through each phase of evolution, the value

1

line rises due to increased abstraction. Your responsibility and requirement to config‐

ure various pieces of the software, middleware, and infrastructure stack in support of

your application code diminish as the value line rises. The key is that cloud-native

platforms are designed to do more for you so that you can focus on delivering appli‐

cations with business value.

Figure 1-1. Cloud-native platform evolution

Cloud-Native Platform Concepts

In the Preface, I pointed out that Cloud Foundry’s focus is not so much what a

platform is or what it does, but rather what it enables you to achieve. It has the potential

to make the software build, test, deploy, and scale cycle significantly faster. It removes

many of the hurdles involved in deploying software, making it possible for you to

release software at will.

Specifically, here’s what the Cloud Foundry platform offers:

Services as a higher level of abstraction above infrastructure

Cloud Foundry provides a self-service mechanism for the on-demand

deployment of applications bound to an array of provisioned middleware and routing

services. This benefit removes the management overhead of both the middleware

and infrastructure layer from the developer, significantly reducing the

development-to-deployment time.

Containers

Cloud Foundry runs all deployed applications in containers. You can deploy

applications as container images or as standalone apps containerized by Cloud

Foundry. This provides flexibility. Companies already established with Docker

can deploy existing Docker images to Cloud Foundry. However, containerizing

applications on the user’s behalf offers additional productivity and operational

benefits because the resulting container image is built from known and vetted

platform components. This approach allows you to run your vulnerability scans

against your trusted artifacts once per update. From this point, only the

application source code requires additional vulnerability scanning on a per-deployment

basis. Essentially, there is less to check on a per-deployment basis because all of

your supporting artifacts have already been vetted.

Agile and automation

You can use Cloud Foundry as part of a CI/CD pipeline to provision

environments and services on demand as the application moves through the pipeline to a

production-ready state. This helps satisfy the key Agile requirement of getting

code into the hands of end users when required.

Cultural shift to DevOps

Cross-cutting concerns are a concept well understood by developers. Adopting

Cloud Foundry is ideally accompanied by a cultural shift to DevOps, meaning

that you need to break down traditional walls, team silos, and ticket-based

hand-offs to get the most benefit from it.

Microservices support

Cloud Foundry supports microservices through providing mechanisms for

integrating and coordinating loosely coupled services. To realize the benefits of

microservices, a platform is required to provide additional supporting

capabilities; for example, Cloud Foundry provides applications with capabilities such as

built-in resilience, application authentication, and aggregated logging.

Cloud-native application support

Cloud Foundry provides a contract against which applications can be developed.

This contract makes doing the right thing simple and will result in better

application performance, management, and resilience.

Not all cloud-native platforms are the same. Some are self-built and pieced together

from various components; others are black-boxed and completely proprietary. The

Cloud Foundry cloud-native platform has three defining characteristics: it is

structured, opinionated, and open. I’ll examine each of these traits in the following

sections.

The Structured Platform

Within the platform space, two distinct architectural patterns have emerged,

structured and unstructured:

• Structured platforms provide built-in capabilities and integration points for key

concerns such as enterprise-wide user management, security, and compliance.

With these kinds of platforms, everything you need to run your applications

should be provided in a repeatable way, regardless of what infrastructure you run

on. Cloud Foundry is a perfect example of a structured platform.

• Unstructured platforms have the flexibility to define a bespoke solution at a

granular level. An example of an unstructured platform would involve a “build

your own platform” approach with a mix of cloud-provided services and

homegrown tools, assembled for an individual company.

Structured platforms focus on simplifying the overall operational model. Rather than

integrating, operating, and maintaining numerous individual components, the

platform operator just deals with the one platform. Structured platforms remove all the

undifferentiated heavy lifting: tasks that must be done (for example, service

discovery or application placement) but that are not directly related to revenue-generating

software.

Although structured platforms are often used for building new cloud-native

applications, they also support legacy application integration where it makes sense to do so,

allowing for a broader mix of workloads than traditionally anticipated. The

structured approach provides a much faster “getting started” experience with lower overall

effort required to operate and maintain the environment.

The Opinionated Platform

When you look at successful software, the greatest and most widely adopted

technologies are incredibly opinionated. What this means is that they are built on, and

adhere to, a set of well-defined principles employing best practices. They are proven

to work in a practical way and reflect how things can and should be done when not

constrained by the baggage of technical debt. Opinions produce contracts to ensure

applications are constrained to do the right thing.

Platforms are opinionated because they make specific assumptions and optimizations

to remove complexity and pain from the user. Opinionated platforms are designed to

be consistent across environments, with every feature working as designed out of the

box. For example, the Cloud Foundry platform provides the same user experience

when deployed over different IaaS layers and the same developer experience

regardless of the application language. Opinionated platforms such as Cloud Foundry can

still be configured and extended, but not to the extent that the nature of the

platform changes. Platforms should have opinions on how your software is deployed,

run, and scaled, but not where an application is deployed; this means that, with

respect to infrastructure choice, applications should run anywhere.

The Open Platform

Cloud Foundry is an open platform. It is open on three axes:

• It allows a choice of IaaS layer to underpin it (Google Cloud Platform [GCP],

Amazon Web Services [AWS], Microsoft Azure, VMware vSphere, OpenStack,

etc.).

• It allows for a number of different developer frameworks, polyglot languages,

and application services (Ruby, Go, Spring, etc.).

• It is open-sourced under an Apache 2 license and governed by a

multi-organization foundation.

Closed platforms can be proprietary and often focus on a specific problem. They

might support only a single infrastructure, language, or use case. Open platforms

offer choice where it matters.

Summary

Cloud Foundry is an opinionated, structured, and open platform. As such, it is:

• built on, and adheres to, a set of well-defined principles employing best practices.

• constrained to do the right thing for your application, based on defined

contracts.

• consistent across different infrastructure/cloud environments.

• configurable and extendable, but not to the degree that the nature of the platform

changes.

For the developer, Cloud Foundry provides a fast “on rails” development and

deployment experience. For the operator, it reduces operational effort through providing

built-in capabilities and integration points for key enterprise concerns such as user

management, security, and self-healing.

CHAPTER 2

Concepts

This chapter explains the core concepts underpinning Cloud Foundry.

Understanding these concepts paints a complete picture of why and how you should use the

platform. These concepts include the need to deal with undifferentiated heavy lifting and

why cloud-based operating systems are essential in protecting your cloud investment.

This chapter also touches on the philosophical perspectives behind Cloud Foundry

with its opinionated do more approach. Operational aspects, including release

engineering through BOSH, and built-in resilience and fault tolerance are also

introduced. Finally, some of the core capabilities of the platform beyond container

orchestration are introduced, including the aggregated streaming of logs and metrics

and User Account and Authentication (UAA) management.

Undifferentiated Heavy Lifting

Cloud Foundry is a platform for running applications and one-off tasks. The essence

of Cloud Foundry is to provide companies with the speed, simplicity, and control

they need to develop and deploy applications. It achieves this by undertaking many of

the burdensome boilerplate responsibilities associated with delivering software.

These types of responsibilities are referred to as undifferentiated heavy lifting, tasks

that must be done—for example, container orchestration or application placement—

but that are not directly related to the development of revenue-generating software.

The following are some examples of undifferentiated heavy lifting:

• Provisioning VMs, OSs, middleware, and databases

• Application runtime configuration and memory tuning

• User management and SSO integration

• Load balancing and traffic routing

• Centralized log aggregation

• Scaling

• Security auditing

• Providing fault tolerance and resilience

• Service discovery

• Application placement and container creation and orchestration

• Blue/green deployments with the use of canaries

If you do not have a platform to abstract the underlying infrastructure and provide

the aforementioned capabilities, this additional burden of responsibility remains

yours. If you are spending significant time and effort building bespoke environments

for shipping software, refocusing investment back into your core business will

provide a huge payoff. Cloud Foundry allows enterprises to refocus effort back into the

business by removing as much of the undifferentiated heavy lifting as possible.

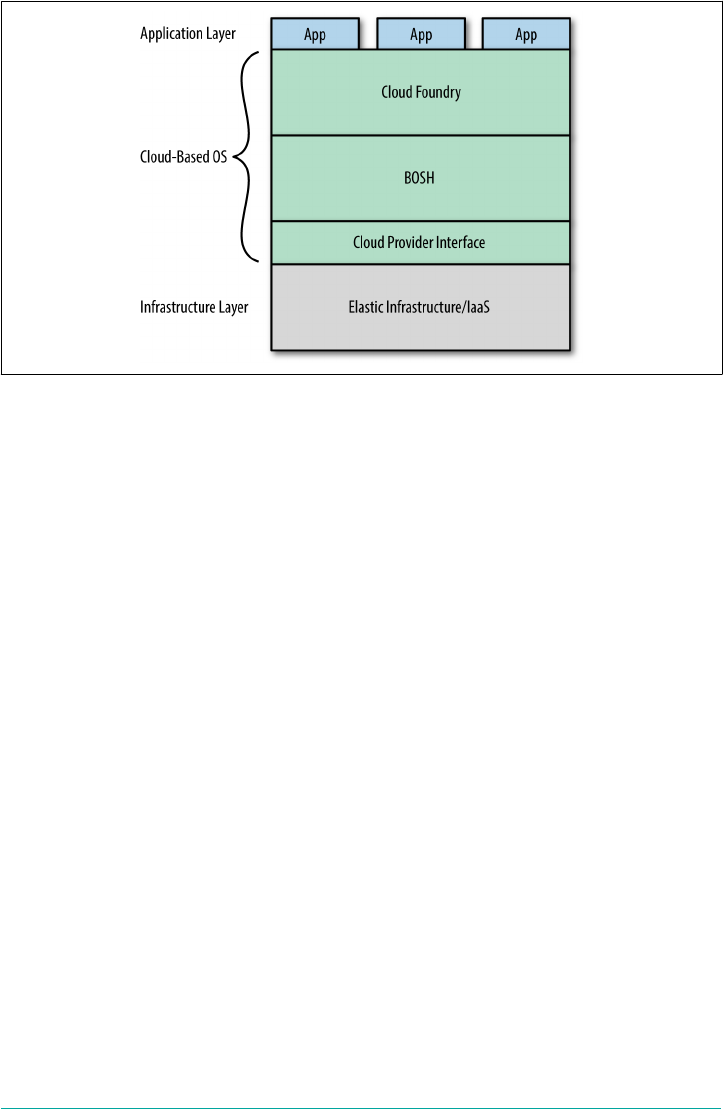

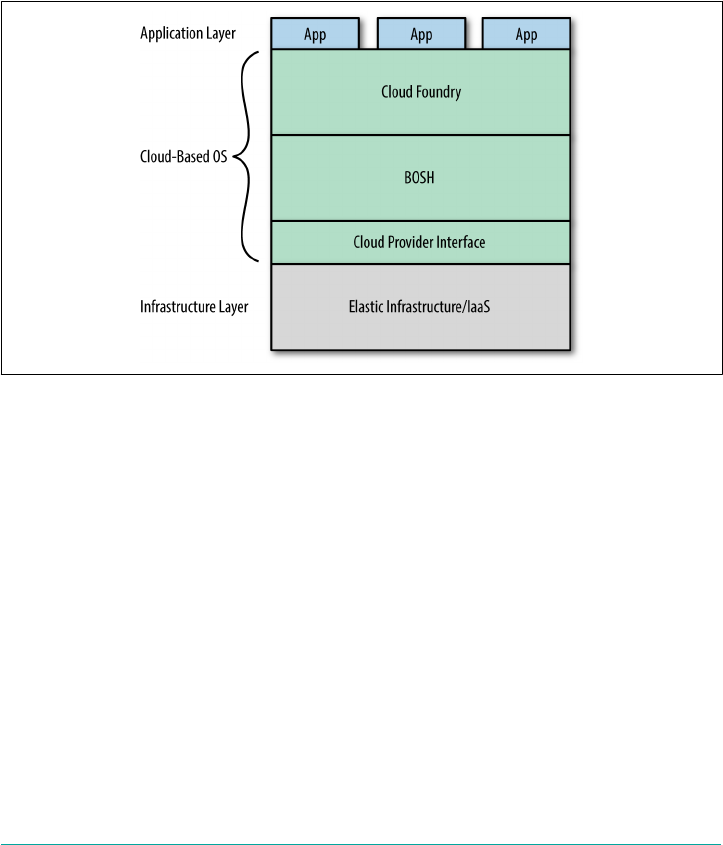

The Cloud Operating System

As an application platform, Cloud Foundry is infrastructure-agnostic, sitting on top

of your infrastructure of choice. As depicted in Figure 2-1, Cloud Foundry is

effectively a cloud-based operating system that utilizes cloud-based resources, which are

hidden and abstracted away from the end user. As discussed in Chapter 1, in the

same way that the OS on your phone, tablet, or laptop abstracts the underlying

physical compute resource, Cloud Foundry abstracts the infrastructure’s compute resource

(specifically virtual storage, networking, RAM, and CPU). The net effect is that Cloud

Foundry serves as both a standard and an efficient way to deploy applications and

services across different cloud-computing environments. Conversely, if you are stuck

with directly using IaaS-specific APIs, you need knowledge of the developer

patterns and operations specific to the underlying IaaS technology, which frequently results

in applications becoming tightly coupled to the underlying infrastructure.

Figure 2-1. Cloud Foundry layers forming a cloud-based OS

Do More

Historically the long pole of application delivery, the part on the critical path that

blocks progress, has been the IT department. This results in a concept I call server

hugging, whereby developers hold on to (and hug) a plethora of VMs just in case they

need them again someday.

Nowadays, businesses no longer need to be constrained by lengthy IT processes or

organizational silos. Cloud Foundry provides a contractual promise to allow

businesses to move with velocity and establish a developer-feedback loop so that they can

tightly align products to user expectations. With Cloud Foundry, product managers

get their business back and IT engineers can focus on more interesting issues and get

to eat dinner at home.

Platforms are concerned not only with providing environments and middleware for

running applications. For example, Cloud Foundry takes on the responsibility of

keeping applications up and running in the face of failures within the system. It also

provides security, user administration, workload scheduling, and monitoring

capabilities. Onsi Fakhouri, Pivotal’s Vice President of Research and Development, famously

tweeted this haiku:

Here is my source code,

run it on the cloud for me.

I do not care how!

Onsi’s quote captures the essence of Cloud Foundry’s do more capability. Cloud

Foundry is about doing more on behalf of both the developer and operator so that

they can focus on what really differentiates the business. This characteristic is seen all

throughout the Cloud Foundry ecosystem. You can take a similar approach with

BOSH, Cloud Foundry’s release-engineering system, and state, “Here are my servers,

make them a Cloud Foundry. I do not care how!”

The Application as the Unit of Deployment

Traditionally, deploying application code required provisioning and deploying VMs,

OSs, and middleware to create a development environment for the application to run

in. After that environment was provisioned, it required patching and ongoing

maintenance. New environments were then created as the application moved through the

deployment pipeline.

Early incarnations of platforms centered on middleware: defining complex topology

diagrams of application servers, databases, and messaging engines into which you

could drop your application. When this topology diagram (or blueprint) was defined,

you then specified some additional configuration such as IP addresses and ports to

bring the defined topology and applications into existence. Although this was a step

in the right direction, from a developer’s perspective there was still a layer of

complexity that you needed to configure for each deployment.

Cloud Foundry differs from traditional provisioning and orchestration engines in a

fundamental way: it uses middleware and infrastructure directly, allowing

streamlined development through self-service environments. Developers can build, deploy,

run, and scale applications on Cloud Foundry without having to be mindful of the

specific underlying infrastructure, middleware, and container implementation.

Cloud Foundry allows the unit of deployment, i.e., what you deploy to run your

application, to be isolated to just the application itself. Even though there are some

benefits to encapsulating both your application and dependencies as a precomposed

container image, I still believe it is more secure and more efficient to keep just the

application as the unit of deployment and allow the platform to handle the remaining

concerns. The trade-offs between both approaches are discussed further in Chapter 9;

however, the benefit of Cloud Foundry is that it supports both approaches.

Buildpacks are discussed at length in that chapter, but for now, it is enough to know that

buildpacks provide the framework and runtime support for your applications. A

specific buildpack is used to package your application with all of its dependencies. The

resulting staged application is referred to as a droplet.

On-boarding developers is easy; they can deploy applications to Cloud Foundry

using existing tool chains with little to no code modification. It enables the developer

to remove the cost and complexity of configuring infrastructure for their

applications. Using a self-service model, developers can deploy and scale applications

without being directly locked into the IaaS layer.

Because developers no longer need to concern themselves with, for example, which

application container to use, which version of Java, and which memory settings or

garbage-collection (GC) policy to employ, they can simply push their applications to

Cloud Foundry, and the applications run. This allows developers to focus on

delivering applications that offer business value. Applications can then be bound to a wide

set of backing services that are available on demand.

Units of Deployment

The phrase “the application is the unit of deployment” is used

liberally. The idea of applications as the sole unit of currency has changed with

the emergence of Diego, Cloud Foundry’s new runtime. Cloud

Foundry now supports both applications running as long-running

processes (LRPs) and discrete “run once” tasks such as Bash scripts

and Cron-like jobs. Diego LRPs are also referred to as application

instances, or AIs. What you deploy, be it an actual app or just a

script, is not important. The key takeaway is the removal of the

need for deploying additional layers of technology.

Using cf push Command to Deploy

Cloud Foundry provides several ways for a user to interact with it, but the principal

avenue is through its CLI. The most renowned CLI command often referenced by the

Cloud Foundry community is $ cf push.

You use the cf push command to deploy your application. It has demonstrably

improved the deployment experience. From the time you run cf push to the point

when the application is available, Cloud Foundry performs the following tasks:

• Uploads and stores application files

• Examines and stores application metadata

• Stages the application by using a buildpack to create a droplet

• Selects an appropriate execution environment in which to run the droplet

• Starts the AI and streams logs to the Loggregator

This workflow is explored in more depth in Chapter 6.
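
As a rough illustration of how a deployment script might drive this step, the following Python sketch assembles a `cf push` command line without running it. The application name `my-app` and the `./build` path are hypothetical, and the flags shown (`-p`, `-i`, `-m`, `-b`) are the ones I understand the cf CLI to accept; check `cf push --help` for your CLI version before relying on them.

```python
import shlex


def cf_push_command(app_name, path=None, instances=None, memory=None, buildpack=None):
    """Build the argument list for a `cf push` invocation without running it."""
    args = ["cf", "push", app_name]
    if path is not None:
        args += ["-p", path]            # directory or archive holding the app files
    if instances is not None:
        args += ["-i", str(instances)]  # number of application instances (AIs)
    if memory is not None:
        args += ["-m", memory]          # memory limit per instance, e.g. "512M"
    if buildpack is not None:
        args += ["-b", buildpack]       # buildpack to use during staging
    return args


# Hypothetical application name and path, for illustration only.
command = cf_push_command("my-app", path="./build", instances=2, memory="512M")
print(shlex.join(command))
```

Returning a list rather than a single string keeps the arguments safe to hand to `subprocess.run` later without any shell quoting concerns.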

Staging

Although it is part of the cf push workflow, staging is a core Cloud Foundry

concept. Cloud Foundry allows users to deploy a prebuilt Docker image or an application

artifact (source code or binaries) that has not yet been containerized. When

deploying an application artifact, Cloud Foundry will stage the application on a machine or

VM known as a Cell, using everything required to compile and run the apps locally,

including the following:

• The OS stack on which the application runs

• A buildpack containing all languages, libraries, dependencies, and runtime

services the app uses

The staging process results in a droplet that the Cell can unpack, compile, and run.

You can then run the resulting droplet (as in the case of a Docker image) repeatedly

over several Cells. The same droplet runs the same app instances over multiple Cells

without incurring the cost of staging every time a new instance is run. This ability

provides deployment speed and confidence that all running instances from the same

droplet are identical.
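
The "stage once, run many times" idea can be sketched with a toy model. This is not how Cloud Foundry implements staging; the `Platform` class, its method names, and the use of a content hash to identify a droplet are all assumptions made purely for illustration.

```python
import hashlib


class Platform:
    """Toy model: stage an artifact once, then start many instances from the droplet."""

    def __init__(self):
        self.staging_runs = 0
        self.droplets = {}

    def stage(self, app_name, artifact, buildpack):
        """Combine artifact and buildpack into a droplet, identified by a content hash."""
        self.staging_runs += 1
        digest = hashlib.sha256(buildpack.encode() + b"\0" + artifact).hexdigest()
        self.droplets[app_name] = digest
        return digest

    def start_instances(self, app_name, cells):
        """Start one instance per listed Cell, all from the same stored droplet."""
        droplet = self.droplets[app_name]
        return [(cell, droplet) for cell in cells]


platform = Platform()
platform.stage("my-app", b"print('hello')", "python_buildpack")
instances = platform.start_instances("my-app", ["cell-1", "cell-2", "cell-3"])
```

Three instances are started on three Cells, yet staging ran only once and every instance refers to the same droplet identifier, which is the property the text describes.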

Self-Service Application Life Cycle

In most traditional scenarios, the application developer and application operator

typically perform the following:

• Develop an application

• Deploy application services

• Deploy an application and connect (bind) it to application services

• Scale an application, both up and down

• Monitor an application

• Upgrade an application

This application life cycle is in play until the application is decommissioned and

taken offline. Cloud Foundry simplifies the application life cycle by offering

self-service capabilities to the end user. Adopting a self-service approach removes

hand-offs and potentially lengthy delays between teams. For example, deploying

an application, provisioning and binding services, scaling, monitoring, and

upgrading are all available through a simple call to the platform.

With Cloud Foundry, as mentioned earlier, the application or task itself becomes the

single unit of deployment. Developers just push their applications to Cloud Foundry,

and those applications run. If developers require multiple instances of an application

to be running, they can use cf scale to scale the application to N AIs.

Cloud Foundry removes the cost and complexity of configuring infrastructure and

middleware per application. Using a self-service model, users can do the following:

• Deploy applications

• Provision and bind additional services, such as messaging engines, caching

solutions, and databases

• Scale applications

• Monitor application health and performance

• Update applications

• Delete applications

Deploying and scaling applications are completely independent operations. This

provides the flexibility to scale at will, without the cost of having to redeploy the

application every time. Users can simply scale an application with a self-service call to the

platform. Through commercial products such as Pivotal Cloud Foundry, you can set

up autoscaling policies for dynamic scaling of applications when they meet certain

configurable thresholds.
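
To make the idea of threshold-based scaling concrete, here is a minimal sketch of a policy that decides on an instance count and then builds the matching `cf scale` command. The CPU thresholds, the one-instance step, and the function names are hypothetical; this is not the algorithm used by any commercial autoscaler.

```python
def desired_instances(current, cpu_percent, low=20.0, high=80.0, minimum=1, maximum=10):
    """Return the instance count a simple threshold policy would ask for."""
    if cpu_percent > high:
        target = current + 1      # scale out by one instance
    elif cpu_percent < low:
        target = current - 1      # scale in by one instance
    else:
        target = current          # within the band: leave the count alone
    return max(minimum, min(maximum, target))


def cf_scale_command(app_name, instances):
    """Argument list for `cf scale`; -i sets the number of instances."""
    return ["cf", "scale", app_name, "-i", str(instances)]


# Hypothetical reading: two instances averaging 90% CPU.
target = desired_instances(current=2, cpu_percent=90.0)
scale_command = cf_scale_command("my-app", target)
```

Because scaling is independent of deployment, the only thing the policy has to emit is a new instance count; the droplet that was already staged is reused.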

Removing the infrastructure, OS, and middleware configuration concerns from

developers allows them to focus all their effort on the application instead of

deploying and configuring supporting technologies. This keeps the development focus

where it needs to be, on the business logic that generates revenue.

The Twelve-Factor Contract

An architectural style known as cloud-native applications has been established to

describe the design of applications specifically written to run in a cloud environment.

These applications avoid some of the antipatterns that were established in the

client-server era, such as writing data to the local filesystem. Those antipatterns do not work

as well in a cloud environment because, for example, local storage is ephemeral given

that VMs can move between different hosts. The Twelve-Factor App explains the 12

principles underpinning cloud-native applications.
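
One of the twelve factors is keeping configuration in the environment rather than in local files. The sketch below assumes the environment variables that Cloud Foundry is documented to provide to applications (`PORT` and `VCAP_SERVICES`, neither of which is covered in this chapter); the service label `p-mysql`, the instance name `orders-db`, and the URI are invented for the example.

```python
import json
import os


def service_credentials(service_name, environ=os.environ):
    """Return the credentials of a bound service instance, or None if absent."""
    services = json.loads(environ.get("VCAP_SERVICES", "{}"))
    for instances in services.values():          # top-level keys are service labels
        for instance in instances:
            if instance.get("name") == service_name:
                return instance.get("credentials", {})
    return None


def listen_port(environ=os.environ, default=8080):
    """Port to bind to, taken from the environment rather than hard-coded."""
    return int(environ.get("PORT", default))


# Hypothetical environment, shaped like the one an application would receive.
example_env = {
    "PORT": "61001",
    "VCAP_SERVICES": json.dumps(
        {"p-mysql": [{"name": "orders-db", "credentials": {"uri": "mysql://example"}}]}
    ),
}
credentials = service_credentials("orders-db", example_env)
```

Passing the environment in as a parameter keeps the functions easy to test and avoids any dependence on files written to ephemeral local storage.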

Platforms offer a set of contracts to the applications and services that run on them.

These contracts ensure that applications are constrained to do the right thing. Twelve

Factor can be thought of as the contract between an application and a cloud-native

platform.

There are benefits to adhering to a contract that constrains things correctly. Twitter is

a great example of a constrained platform. You can write only 140 characters, but

that constraint becomes an extremely valuable feature of the platform. You can do a

lot with 140 characters coupled with the rich features surrounding that contract.

Similarly, platform contracts are born out of previously tried-and-tested constraints; they

are enabling and make doing the right thing—good developer practices—easy for

developers.

¹ The terms VM and machine are used interchangeably because BOSH can deploy to multiple infrastructure

environments ranging from containers to VMs, right down to configuring physical servers.

Release Engineering through BOSH

In addition to developer concerns, the platform provides responsive IT operations,

with full visibility and control over the application life cycle, provisioning,

deployment, upgrades, and security patches. Several other operational benefits exist, such as

built-in resilience, security, centralized user management, and better insights through

capabilities like aggregated metrics and logging.

Rather than integrating, operating, and maintaining numerous individual

components, the platform operator deals only with the platform. Structured platforms

handle all the aforementioned undifferentiated heavy lifting tasks.

The Cloud Foundry repository is structured for use with BOSH. BOSH is an open

source tool chain for release-engineering, deployment, and life cycle management.

Using a YAML (YAML Ain’t Markup Language) deployment manifest, BOSH creates

and deploys (virtual) machines¹ on top of the targeted computing infrastructure and

then deploys and runs software (in our case Cloud Foundry and supporting services)

on to those created machines. Many of the benefits to operators are provided through

using BOSH to deploy and manage Cloud Foundry. BOSH is often overlooked as just

another component of Cloud Foundry, but it is the bedrock of Cloud Foundry and a

vital piece of the ecosystem. It performs monitoring, failure recovery, and software

updates with zero-to-minimal downtime. Chapter 10 discusses BOSH at length.

Rather than utilizing a bespoke integration of a variety of tools and techniques that

provide solutions to individual parts of the release-engineering goal, BOSH is

designed to be a single tool covering the entire set of requirements of release

engineering. BOSH enables software deployments to be:

• Automated

• Reproducible

• Scalable

• Monitored with self-healing failure recovery

• Updatable with zero-to-minimal downtime

BOSH translates intent into action repeatably, ensuring that every provisioned

release is identical. This removes the challenge of configuration

drift and removes the sprawl of snowflake servers.

BOSH configures infrastructure through code. By design, BOSH tries to abstract

away the differences between infrastructure platforms (IaaS or physical servers) into

a generalized, cross-platform description of your deployment. This provides the

benefit of being infrastructure agnostic (as far as possible).

BOSH performs monitoring, failure recovery, software updates, and patching with

zero-to-minimal downtime. Without such a release-engineering tool chain, all these

concerns remain the responsibility of the operations team. A lack of automation

exposes the developer to unnecessary risk.

Built-In Resilience and Fault Tolerance

A key feature of Cloud Foundry is its built-in resilience. Cloud Foundry provides

built-in resilience and self-healing based on control theory. Control theory is a branch

of engineering and mathematics that uses feedback loops to control and modify the

behavior of a dynamic system. Resiliency is about ensuring that the actual system

state (the number of running applications, for example) matches the desired state at

all times, even in the event of failures. Resiliency is an essential but often costly

component of business continuity.

Cloud Foundry automates the recovery of failed applications, components, and

processes. This self-healing removes the recovery burden from the operator, ensuring

speed of recovery. Cloud Foundry, underpinned by BOSH, achieves resiliency and

self-healing through:

• Restarting failed system processes

• Recreating missing or unresponsive VMs

• Deploying new AIs if an application crashes or becomes unresponsive

• Striping applications across availability zones (AZs) to enforce separation of the

underlying infrastructure

• Dynamic routing and load balancing

Cloud Foundry deals with application orchestration and placement focused on even

distribution across the infrastructure. The user should not need to worry about how

the underlying infrastructure runs the application beyond having equal distribution

across different resources (known as availability zones). The fact that multiple copies

of the application are running with built-in resiliency is what matters.

Cloud Foundry provides dynamic load balancing. Application consumers use a route

to access an application; each route is directly bound to one or more applications in

Cloud Foundry. When an application runs multiple instances, Cloud Foundry balances the load across the

instances, dynamically updating its routing table. Dead application routes are

automatically pruned from the routing table, with new routes added when they become

available.
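
A routing table that balances load and prunes dead backends can be sketched in a few lines. The `RoutingTable` class, the round-robin choice, the route name, and the addresses are all illustrative assumptions rather than a description of Cloud Foundry's router.

```python
from collections import defaultdict


class RoutingTable:
    """Toy route table: each route maps to the backends currently serving it."""

    def __init__(self):
        self._backends = defaultdict(list)
        self._next = defaultdict(int)

    def register(self, route, backend):
        """Add a backend (an application instance address) to a route."""
        if backend not in self._backends[route]:
            self._backends[route].append(backend)

    def prune(self, route, backend):
        """Drop a dead backend so no further requests are sent to it."""
        if backend in self._backends[route]:
            self._backends[route].remove(backend)

    def pick(self, route):
        """Round-robin over the live backends for a route."""
        backends = self._backends[route]
        if not backends:
            raise LookupError(f"no backends registered for {route}")
        index = self._next[route] % len(backends)
        self._next[route] = index + 1
        return backends[index]


table = RoutingTable()
for backend in ("10.0.0.1:61001", "10.0.0.2:61001", "10.0.0.3:61001"):
    table.register("my-app.example.com", backend)
first_three = [table.pick("my-app.example.com") for _ in range(3)]
table.prune("my-app.example.com", "10.0.0.2:61001")
after_prune = [table.pick("my-app.example.com") for _ in range(4)]
```

After the second backend is pruned, requests continue to alternate between the two remaining instances without any change on the consumer's side.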

Without these capabilities, the operations team is required to continually monitor

and respond to pager alerts from failed apps and invalid routes. By replacing manual

interaction with automated, self-healing software, applications and system

components are restored quickly with less risk and downtime. The resiliency concern is

satisfied once, for all applications running on the platform, as opposed to developing

customized monitoring and restart scripts per application. The platform removes the

ongoing cost and associated maintenance of bespoke resiliency solutions.

Self-Healing Processes

Traditional infrastructure-as-code tools do not check whether provisioned services are

up and running. BOSH has strong opinions on how to create your release, forcing

you to create a monitor script for the process. If a BOSH-deployed component has a

process that dies, the monitor script will try to restart it.

Self-Healing VMs

BOSH has a Health Monitor and Resurrector. The Health Monitor uses status and

life cycle events to monitor the health of VMs. If the Health Monitor detects a

problem with a VM, it can trigger an alert and invoke the Resurrector. The Resurrector

automatically recreates VMs identified by the Health Monitor as missing or

unresponsive.
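
The detect-then-recreate pattern might be sketched as follows. The heartbeat timestamps, the 60-second timeout, and the `recreate` callback are hypothetical stand-ins; the real Health Monitor and Resurrector use their own events and settings.

```python
def unresponsive_vms(last_heartbeat, now, timeout_seconds=60.0):
    """Return the VMs whose most recent heartbeat is older than the timeout."""
    return sorted(
        vm for vm, seen_at in last_heartbeat.items()
        if now - seen_at > timeout_seconds
    )


def resurrect(vms, recreate):
    """Call the supplied recreate function for every VM flagged as unresponsive."""
    return [recreate(vm) for vm in vms]


# Hypothetical heartbeat timestamps, in seconds.
heartbeats = {"router/0": 1010.0, "cell/0": 1055.0, "cell/1": 920.0}
flagged = unresponsive_vms(heartbeats, now=1060.0)
recreated = resurrect(flagged, recreate=lambda vm: f"recreated {vm}")
```

Keeping detection and recreation as separate functions mirrors the split between a component that observes health and one that acts on it.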

Self-Healing Application Instance Count

Cloud Foundry runs the application transparently, taking care of the application life

cycle. If an AI dies for any reason (e.g., because of a bug in the application) or a VM

dies, Cloud Foundry can self-heal by starting new instances so as to maintain the

desired capacity to run AIs. It achieves this by monitoring how many instances of

each application are running. The Cell manages its AIs, tracks started instances, and

broadcasts state messages. When Cloud Foundry detects a discrepancy between the

actual number of running instances and the desired number of available AIs, it

takes corrective action and initiates the deployment of new AIs. To ensure resiliency

and fault tolerance, you should run multiple AIs for a single application. The AIs will

be distributed across multiple Cells for resiliency.
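
The comparison of desired and actual instance counts can be sketched as a simple reconciliation step. This is a minimal illustration under stated assumptions, not Cloud Foundry's code: the `reconcile` function, the dictionary shapes, and the app names are hypothetical.

```python
def reconcile(desired, actual):
    """Compare desired and actual instance counts per application.

    Returns a dict of corrective actions: a positive number means
    "start this many instances", a negative number means "stop this many".
    Hypothetical helper for illustration only.
    """
    actions = {}
    for app in desired.keys() | actual.keys():
        delta = desired.get(app, 0) - actual.get(app, 0)
        if delta != 0:
            actions[app] = delta
    return actions


# Desired state: three "web" instances and two "worker" instances.
desired = {"web": 3, "worker": 2}
# Observed state after two "web" instances have died.
actual = {"web": 1, "worker": 2}

corrections = reconcile(desired, actual)  # "web" needs two more instances
```

Running a step like this repeatedly is what makes the behavior self-healing: the platform does not need to know why an instance died, only that the observed count no longer matches the desired one.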

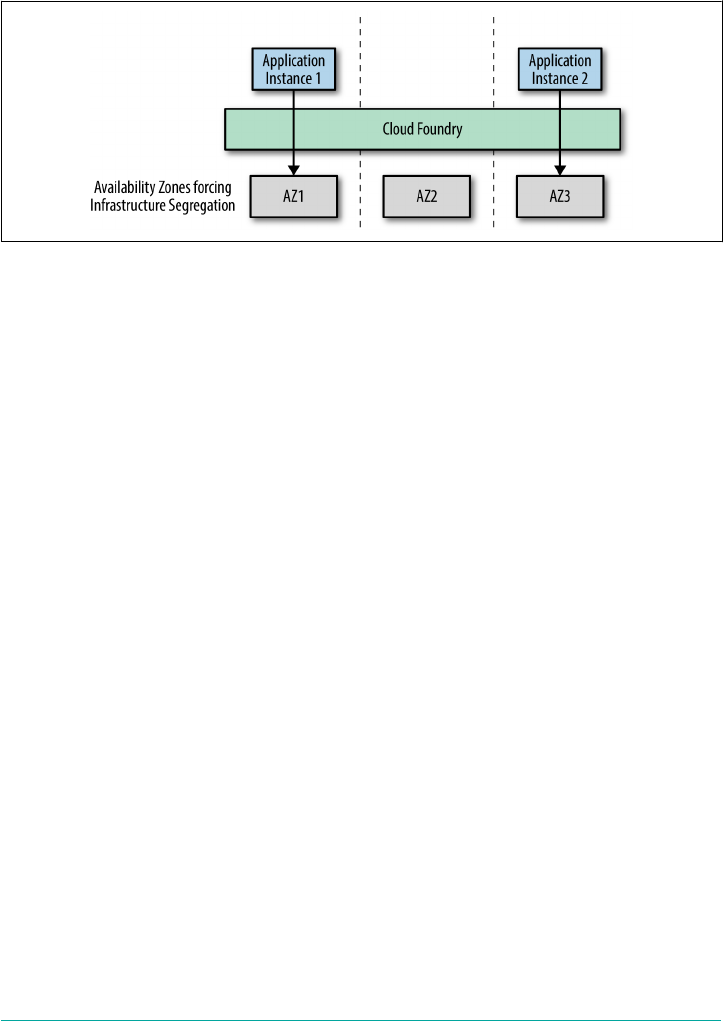

Resiliency Through Availability Zones

Finally, Cloud Foundry supports the use of availability zones (AZs). As depicted in

Figure 2-2, you can use AZs to enforce separation of the underlying infrastructure.

For example, when running on AWS, you can directly map Cloud Foundry AZs to

different AWS AZs. When running on vCenter, you can map Cloud Foundry AZs to

different vCenter Cluster and resource-pool combinations. Cloud Foundry can then

deploy its components across the AZs. When you deploy multiple AIs, Cloud Foundry

will distribute them evenly across the AZs. If, for example, a rack of servers fails

and brings down an entire AZ, the AIs will still be up and serving traffic in the

remaining AZs.

Figure 2-2. Application resiliency through Cloud Foundry AZs

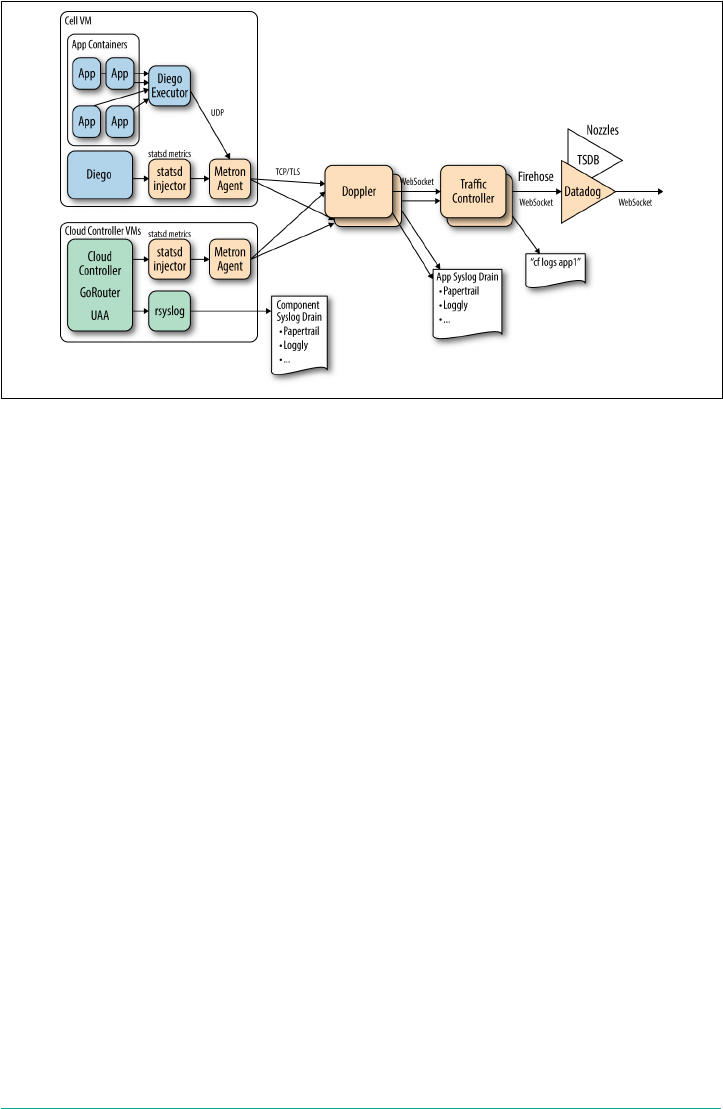
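
As a rough illustration of even distribution across AZs, the sketch below assigns instances to zones in round-robin order and then simulates the loss of one zone. The function name and zone labels are hypothetical, and a real scheduler weighs more factors than this (for example, available capacity on each Cell).

```python
from collections import Counter
from itertools import cycle


def place_instances(instance_count, zones):
    """Assign each instance to a zone in round-robin order (assumed policy)."""
    zone_cycle = cycle(zones)
    return [next(zone_cycle) for _ in range(instance_count)]


# Six AIs spread across three hypothetical zones.
placement = place_instances(6, ["az1", "az2", "az3"])
per_zone = Counter(placement)

# Simulate az1 failing: the instances in the other zones keep serving traffic.
survivors = [zone for zone in placement if zone != "az1"]
```

With six instances and three zones, losing one zone removes only a third of the running capacity, which the platform can then replace in the remaining zones.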

Aggregated Streaming of Logs and Metrics

Cloud Foundry provides insight into both the application and the underlying platform

through aggregated logging and metrics. The logging system within Cloud

Foundry is known as the Loggregator. It is the inner voice of the system, telling the

operator and developer what is happening. It is used to manage the performance,

health, and scale of running applications and the platform itself, via the following:

• Logs provide visibility into behavior; for example, application logs can be used to

trace through a specific call stack.

• Metrics provide visibility into health; for example, container metrics can include

memory, CPU, and disk usage per app instance.

Insights are obtained through storing and analyzing a continuous stream of aggregated,

time-ordered events from the output streams of all running processes and backing

services. Application logs are aggregated and streamed to an endpoint via Cloud

Foundry’s Loggregator Firehose. Logs from the Cloud Foundry system components

can also be made available and processed through a separate syslog drain. Cloud

Foundry produces both the application and system logs to provide a holistic view to

the end user.
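
The idea of combining several output streams into one time-ordered stream can be sketched as follows. This is not how Loggregator is implemented; it only illustrates the aggregation concept. The event tuples, the `RTR` and `APP` source labels, and the messages are made-up sample data, and each input stream is assumed to be already sorted by timestamp.

```python
import heapq

# Each event is (timestamp, source, message); the sample data is illustrative.
router_log = [
    (1, "RTR", "GET /orders 200"),
    (4, "RTR", "GET /orders 500"),
]
app_log = [
    (2, "APP", "loading orders"),
    (3, "APP", "database timeout"),
]


def aggregate(*streams):
    """Merge already-sorted event streams into one time-ordered list."""
    # heapq.merge lazily merges sorted inputs; key selects the timestamp.
    return list(heapq.merge(*streams, key=lambda event: event[0]))


merged = aggregate(router_log, app_log)
for timestamp, source, message in merged:
    print(timestamp, source, message)
```

Reading the merged stream top to bottom shows the router request, the application activity it triggered, and the failing response in the order they occurred.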

Figure 2-3 illustrates how application logs and syslogs are separated as streams, in

part to provide isolation and security between the two independent concerns, and in

part due to consumer preferences. Generally speaking, app developers do not want to

wade through component logs to resolve an app-specific issue. Developers can trace

the log flow from the frontend router to the application code from a single log file.

Figure 2-3. The Loggregator system architecture used for aggregating application logs

and metrics

In addition to logs, metrics are gathered and streamed from system components.

Operators can use metrics information to monitor an instance of Cloud Foundry.

Furthermore, Cloud Foundry events show specific events such as when an application

is started or stopped. The benefits of aggregated log, metric, and event streaming

include the following:

• You can stream logs to a single endpoint.

• Streamed logs provide timestamped outputs per application.

• Both application logs and system-component logs are aggregated, simplifying

their consumption.

• Metrics are gathered and streamed from system components.

• Operators can use metrics information to monitor an instance of Cloud Foundry.

• You can view logs from the command line or drain them into a log management

service such as an ELK stack (Elasticsearch, Logstash, and Kibana), Splunk, or

PCF Metrics.

• Viewing events is useful when debugging problems. For example, it is useful to

be able to correlate an app instance event (like an app crash) to the container’s

specific metrics (high memory prior to crash).
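
The last point, correlating an event with the metrics that preceded it, can be sketched as a simple time-window lookup. The field names, the `metrics_before` helper, the event type string, and the sample values are assumptions made for illustration; they do not reflect the actual format of Cloud Foundry events or container metrics.

```python
def metrics_before(event_time, metrics, window):
    """Return metric samples from the `window` seconds leading up to an event."""
    return [
        sample for sample in metrics
        if event_time - window <= sample["time"] < event_time
    ]


# Hypothetical container memory samples (time in seconds, memory in MB).
memory_samples = [
    {"time": 100, "memory_mb": 310},
    {"time": 110, "memory_mb": 420},
    {"time": 120, "memory_mb": 505},
]

# Hypothetical crash event for the same app instance.
crash = {"time": 125, "type": "app.crash"}

recent = metrics_before(crash["time"], memory_samples, window=20)
peak = max(sample["memory_mb"] for sample in recent)
```

Because events and metrics arrive through the same aggregated stream with timestamps, a lookup of this kind needs no per-application instrumentation.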

The cost of implementing an aggregated log and metrics-streaming solution involves

bespoke engineering to orchestrate and aggregate the streaming of both syslog and

application logs from every component within a distributed system into a central

server. Using a platform removes the ongoing cost and associated maintenance of

bespoke logging solutions.

Security

For enterprises working with cloud-based infrastructure, security is a top concern.

Usually the security teams have the strongest initial objections to Cloud Foundry

because it works in a way that is generally unfamiliar to established enterprise

security teams. However, as soon as these teams understand the strength of Cloud

Foundry’s security posture, my experience is that they become some of your strongest

champions.

Distributed System Security

Cloud Foundry offers significant security benefits over traditional approaches to

deploying applications because it allows you to strengthen your security posture

once, for all applications deployed to the platform. However, securing distributed

systems involves inherent complexity. For example, think about these issues:

• How much effort is required to automatically establish and apply network traffic

rules to isolate components?

• What policies should be applied to automatically limit resources in order to

defend against denial-of-service (DoS) attacks?

• How do you implement role-based access controls (RBAC) with in-built auditing

of system access and actions?

• How do you know which components are potentially affected by a specific vulnerability

and require patching?

• How do you safely patch the underlying OS without incurring application downtime?

These issues are standard requirements for most systems running in corporate data

centers. The more custom engineering you use, the more you need to secure and

patch that system. Distributed systems increase the security burden because there are

more moving parts, and with the advances in container technology, new challenges

arise, such as “How do you dynamically apply microsegmentation at the container

layer?” Additionally, when it comes to rolling out security patches to update the system,

many distributed systems suffer from configuration drift—namely, the lack of

consistency between supposedly identical environments. Therefore, when working

with complex distributed systems (specifically any cloud-based infrastructure), environmental

risk factors are intensified.

The Challenge of Configuration Drift

Deployment environments (such as staging, quality assurance, and

production) are often complex and time-consuming to construct

and administer, producing the ongoing challenge of trying to manage

configuration drift to maintain consistency between environments

and VMs. Reproducible consistency through release-engineering

tool chains such as Cloud Foundry’s BOSH addresses

this challenge.

Environmental Risk Factors for Advanced Persistent Threats

Malware known as advanced persistent threats (APTs) needs three risk factors in

order to thrive:

1. Time

2. Leaked or misused credentials

3. Misconfigured and/or unpatched software

Given enough time, APTs can observe, analyze, and learn what is occurring within

your system, storing away key pieces of information at will. If APTs obtain credentials,

they can then further access other systems and data such as important ingress

points into your protected data layer. Finally, unpatched software vulnerabilities provide

APTs the freedom to further exploit, compromise, and expose your system.

Challenge of Minimal Change

There has been a belief that if enterprises deliver software with velocity, the trade-off

is they must reduce their security posture and increase risk. Therefore, traditionally,

many enterprises have relied on a concept of minimal change to mitigate risk and

reduce velocity. Security teams establish strict and restrictive policies in an attempt to

minimize the injection of new vulnerabilities. This is evident in the ticketing systems required to

make basic configuration changes to middleware and databases, long-lived transport

layer security (TLS) credentials, static firewall rules, and numerous security policies

to which applications must adhere.

Minimal change is compounded by the complexity of the environment. Because

machines are difficult to patch and maintain, environmental complexity introduces a

significant lag between the time a vulnerability is discovered and the time a machine

is patched, be it months or, worse, even years in some production enterprise environments.

2 The three Rs of enterprise security is a phrase coined in an article by Justin Smith, a cloud identity and security

expert. I strongly suggest that if you’re interested in enterprise security, you read the full article titled The

Three R’s of Enterprise Security.

The Three Rs of Enterprise Security

These combined risk factors provide a perfect ecosystem in which APTs can flourish,

and minimal change creates an environment in which all three factors are likely

to occur. Cloud Foundry inverts the traditional enterprise security model by focusing

on the three Rs of enterprise security: rotate, repave, repair.2

1. Rotate the credentials frequently so that they are valid only for short periods of

time.

2. Repave (rebuild) servers and applications from a known good state to cut down

on the amount of time an attack can live.

3. Repair vulnerable software as soon as updates are available.

For the three Rs to be effective in minimizing the APT risk factors, you need to

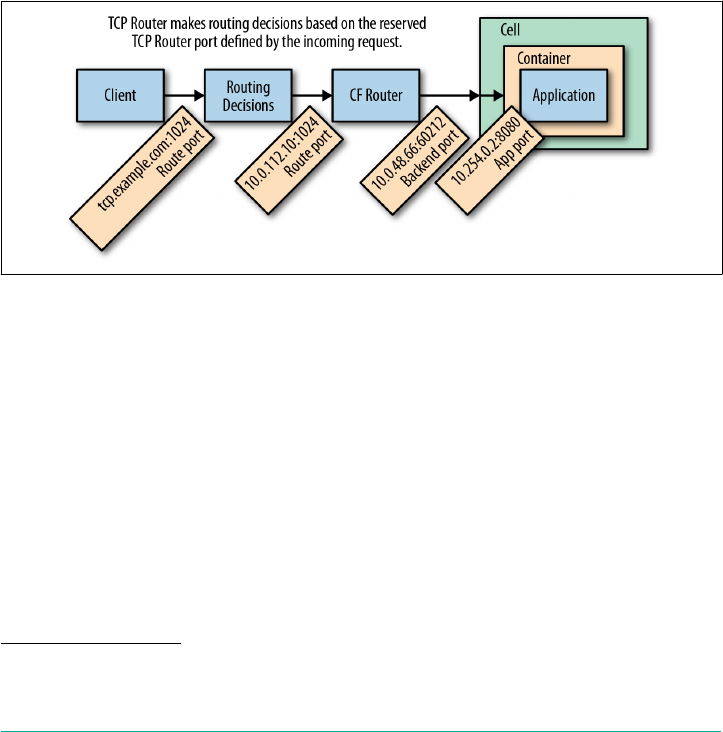

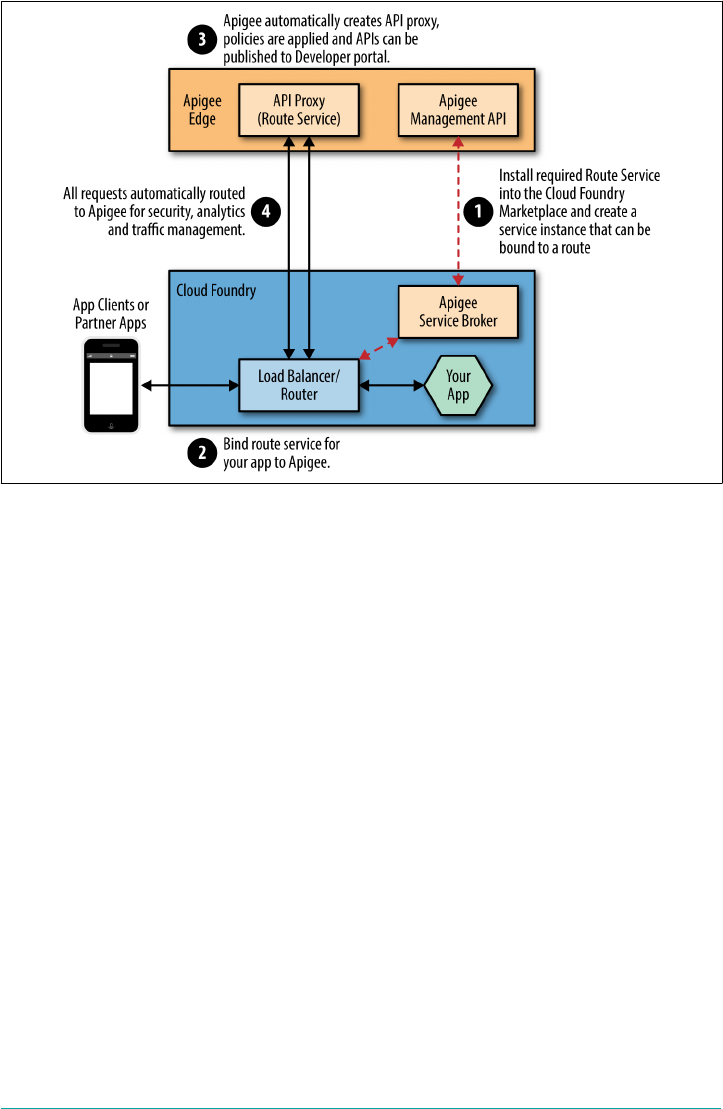

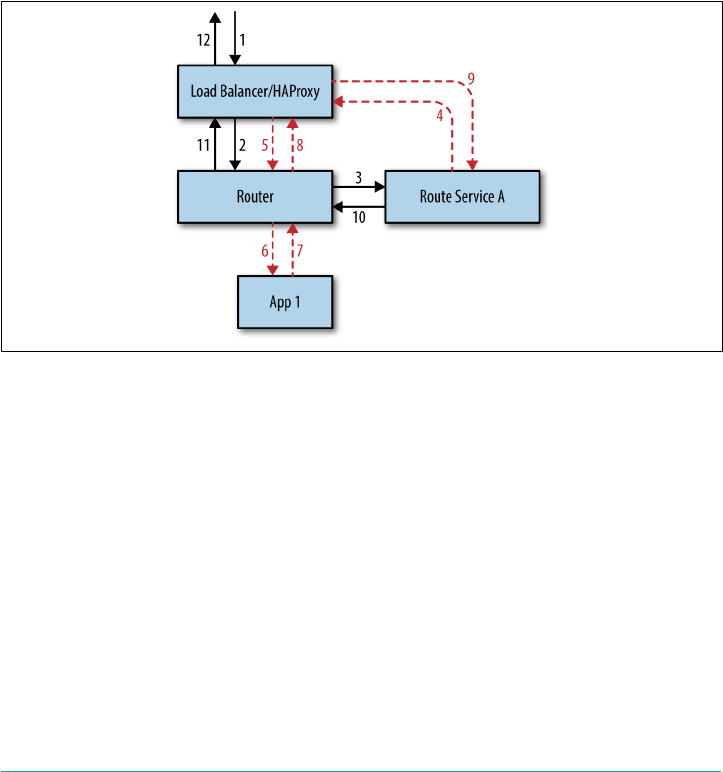

implement them repeatedly at high velocity. For example, data center credentials can