Analysis Of Epidemiological Data Using R And Epicalc Epicalc_Book Book

User Manual: Epicalc_Book

Open the PDF directly: View PDF ![]() .

.

Page Count: 328 [warning: Documents this large are best viewed by clicking the View PDF Link!]

- Chapter 1: Starting to use R

- Chapter 2: Vectors

- Chapter 3: Arrays, Matrices and Tables

- Chapter 4: Data Frames

- Chapter 5: Simple Data Exploration

- Chapter 6: Date and Time

- Chapter 7: An Outbreak Investigation: Describing Time

- Chapter 8: An Outbreak Investigation: Risk Assessment

- Chapter 9: Odds Ratios, Confounding and Interaction

- Chapter 10: Basic Data Management

- Chapter 11: Scatter Plots and Linear Regression

- Chapter 12: Stratified Linear Regression

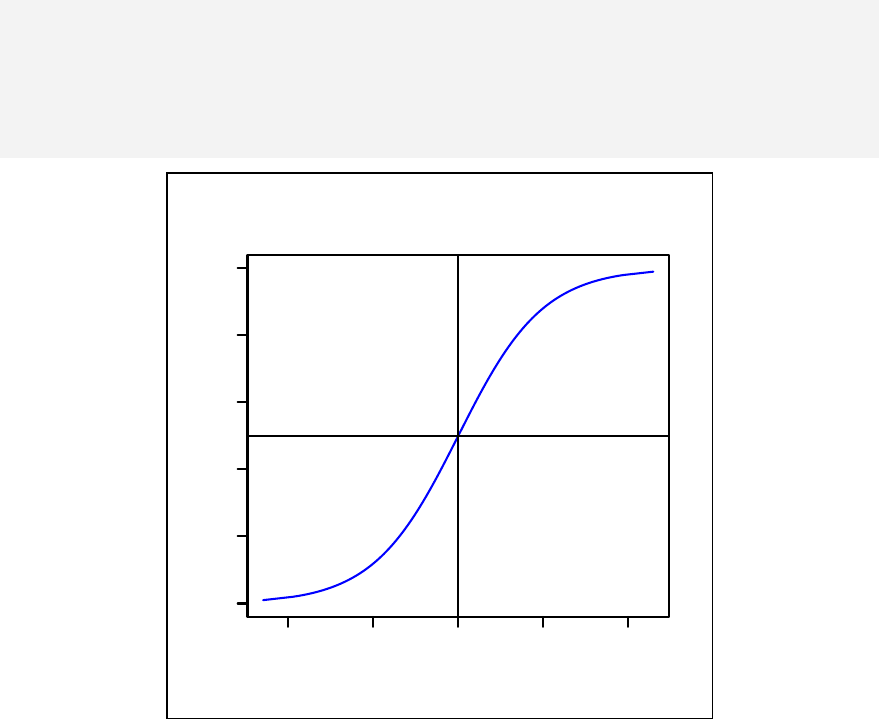

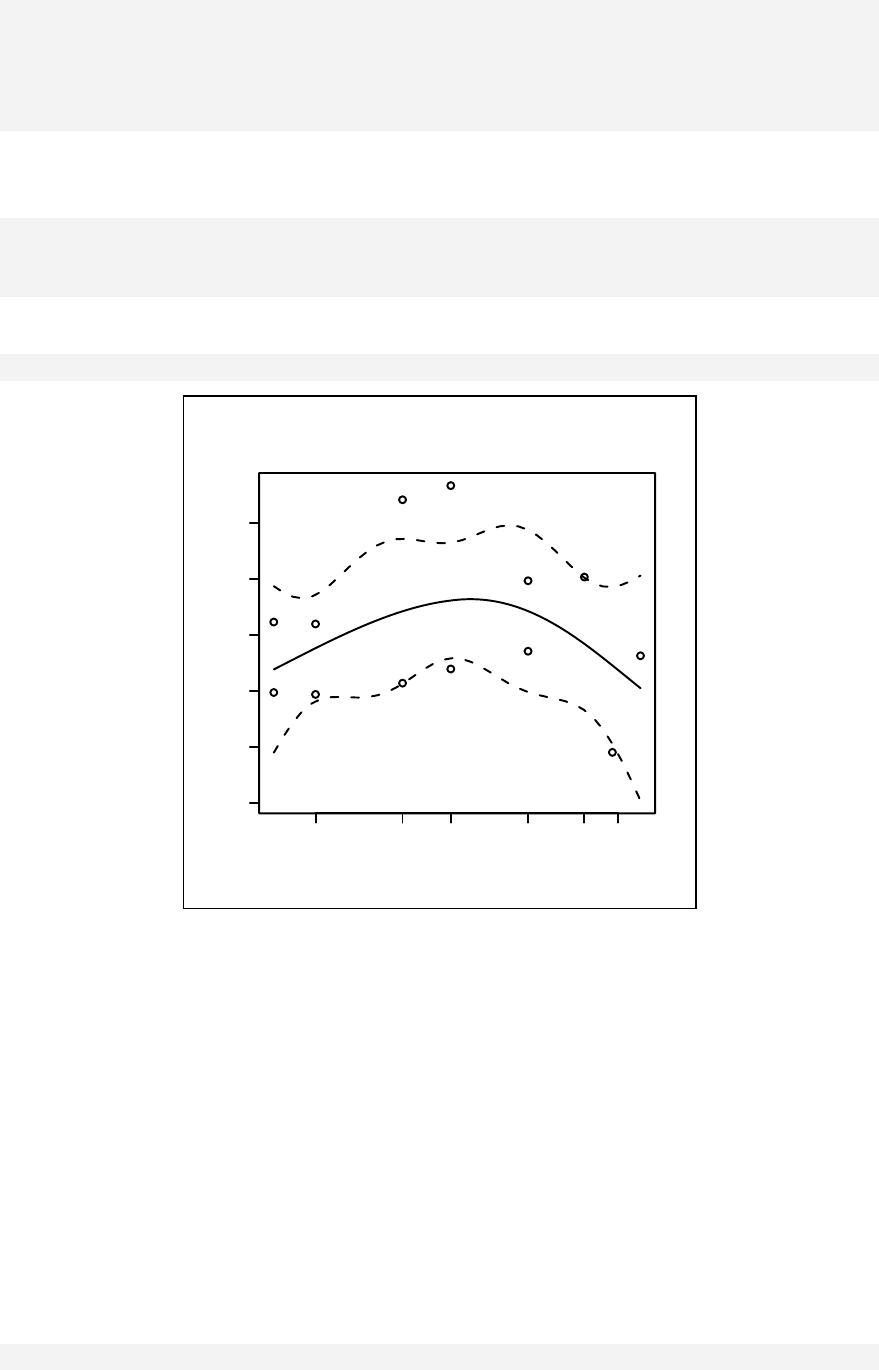

- Chapter 13: Curvilinear Relationship

- Chapter 14: Generalized Linear Models

- Chapter 15: Logistic Regression

- Chapter 16: Matched Case Control Study

- Chapter 17: Polytomous Logistic Regression

- Chapter 18: Ordinal Logistic Regression

- Chapter 19: Poisson ad Negative Binomial Regression

- Chapter 20: Introduction to Multi-level Modelling

- Chapter 21: Survival Analysis

- Chapter 22: Cox Regression

- Chapter 23: Analysing Attitudes Data

- Chapter 24: Sample Size Calculation

- Chapter 25: Documentation

- Chapter 26: Strategies for Handling Large Datasets

- Chapter 27: Table Stacking for a Manuscript

- Solutions to Exercises

- Index

- Epicalc Functions

- Epicalc Datasets

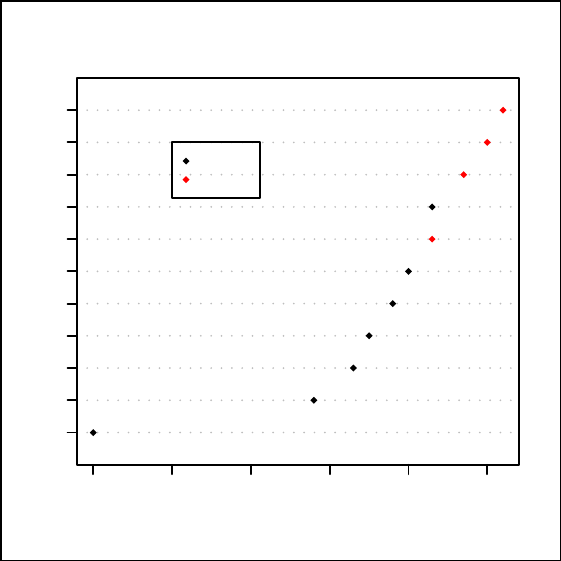

Analysis of epidemiological

data using R and Epicalc

Epidemiology Unit

Prince of Songkla University

THAILAND

> help.start()

> exp(-5)

[1] 0.006738

9

> log(3.8

+ )

[1] 1.335001

> help.start()

> exp(-5)

[1] 0.006738

9

> log(3.8

+ )

[1] 1.335001

0

10

20

30

40

50

Virasakdi Chongsuvivatwong

0

10

20

30

40

50

EPICALC–OK.indd 1 20.2.2008 15:24:54

Analysis of Epidemiological Data Using

R and Epicalc

Author: Virasakdi Chongsuvivatwong

cvirasak@medicine.psu.ac.th

Epidemiology Unit

Prince of Songkla University

THAILAND

i

Preface

Data analysis is very important in epidemiological research. The capacity of

computing facilities has been steadily increasing, moving state of the art

epidemiological studies along the same direction of computer advancement.

Currently, there are many commercial statistical software packages widely used by

epidemiologists around the world. For developed countries, the cost of software is

not a major problem. For developing countries however, the real cost is often too

high. Several researchers in developing countries thus eventually rely on a pirated

copy of the software.

Freely available software packages are limited in number and readiness of use.

EpiInfo, for example, is free and useful for data entry and simple data analysis.

Advanced data analysts however find it too limited in many aspects. For example, it

is not suitable for data manipulation for longitudinal studies. Its regression analysis

facilities cannot cope with repeated measures and multi-level modelling. The

graphing facilities are also limited.

A relatively new and freely available software called R is promising. Supported by

leading statistical experts worldwide, it has almost everything that an

epidemiological data analyst needs. However, it is difficult to learn and to use

compared with similar statistical packages for epidemiological data analysis such as

Stata. The purpose of this book is therefore to bridge this gap by making R easy to

learn for researchers from developing countries and also to promote its use.

My experience in epidemiological studies spans over twenty years with a special

fondness of teaching data analysis. Inspired by the spirit of the open-source

software philosophy, I have spent a tremendous effort exploring the potential and

use of R. For four years, I have been developing an add-on package for R that

allows new researchers to use the software with enjoyment. More than twenty

chapters of lecture notes and exercises have been prepared with datasets ready for

self-study.

Supported by WHO, TDR and the Thailand Research Fund, I have also run a

number of workshops for this software in developing countries including Thailand,

Myanmar, North Korea, Maldives and Bhutan, where R and Epicalc was very much

welcomed. With this experience, I hereby propose that the use of this software

should be encouraged among epidemiological researchers, especially for those who

cannot afford to buy expensive commercial software packages.

ii

R is an environment that can handle several datasets simultaneously. Users get

access to variables within each dataset either by copying it to the search path or by

including the dataset name as a prefix. The power of R in this aspect is a drawback

in data manipulation. When creating a variable or modifying an existing one,

without prefixing the dataset name, the new variable is isolated from its parental

dataset. If prefixing is the choice, the original data is changed but not the copy in

the search path. Careful users need to remove the copy in the search path and

recopy the new dataset into it. The procedure in this aspect is clumsy. Not being

tidy will eventually end up with too many copies in the search path overloading the

system or confusing the analyst on where the variable is actually located.

Epicalc presents a concept solution for common types of work where the data

analyst works on one dataset at a time using only a few commands. In Epicalc the

user can virtually eliminate the necessity of specifying the dataset and can avoid

overloading of the search path very effectively and efficiently. In addition to make

tidying of memory easy to accomplished, Epicalc makes it easy to recognize the

variables by adopting variable labels or descriptions which have been prepared

from other software such as SPSS or Stata or locally prepared by Epicalc itself.

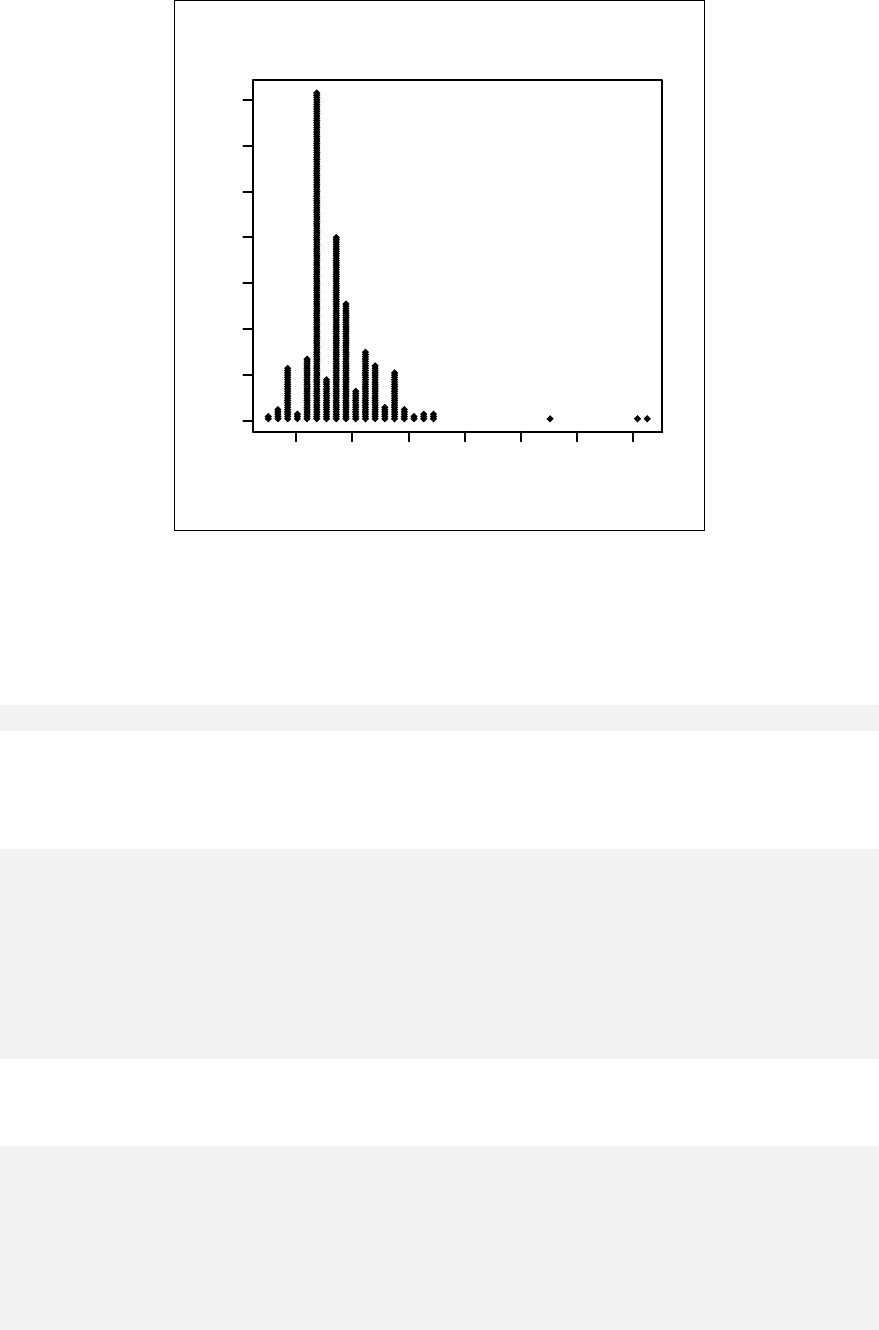

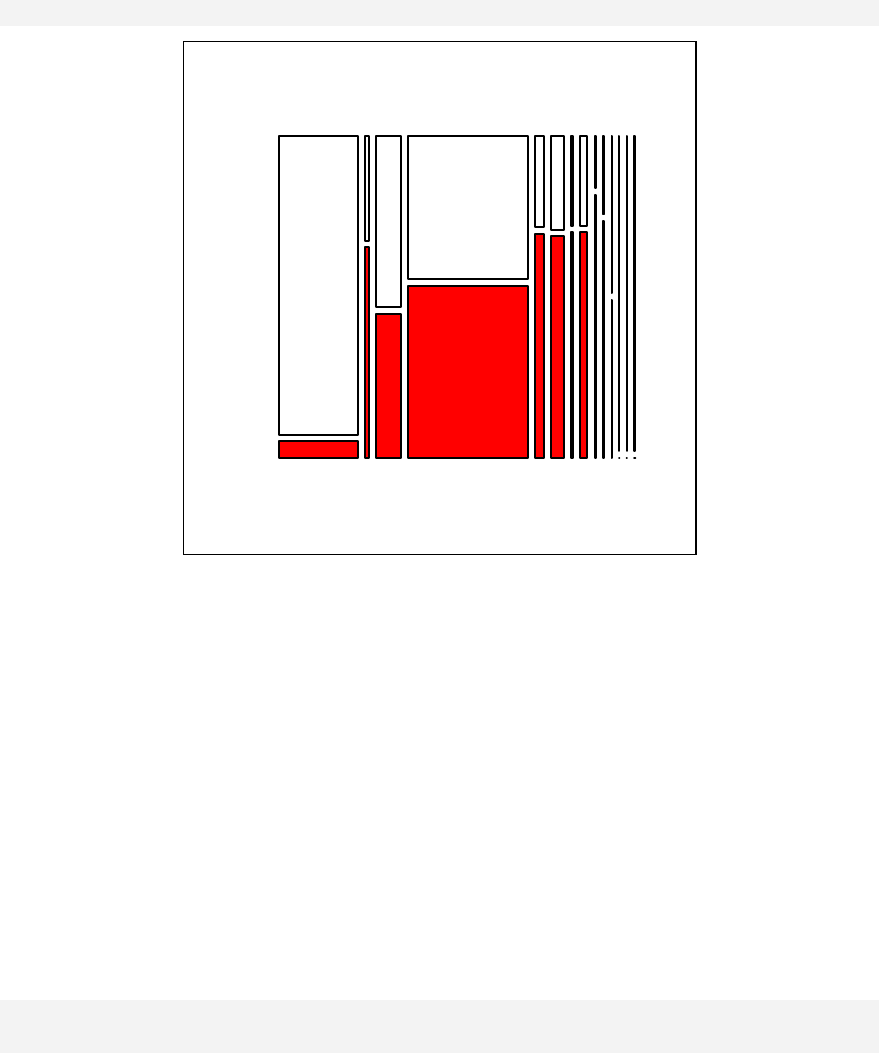

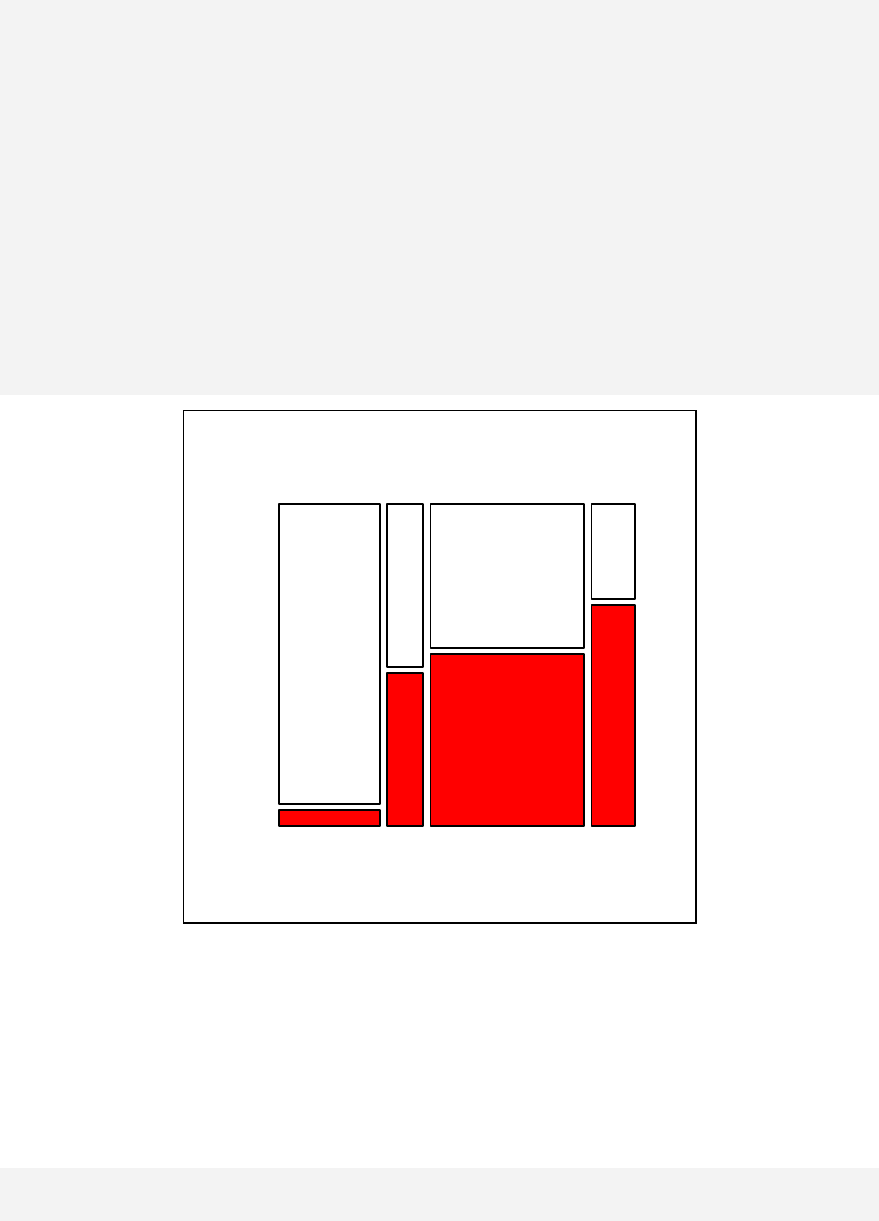

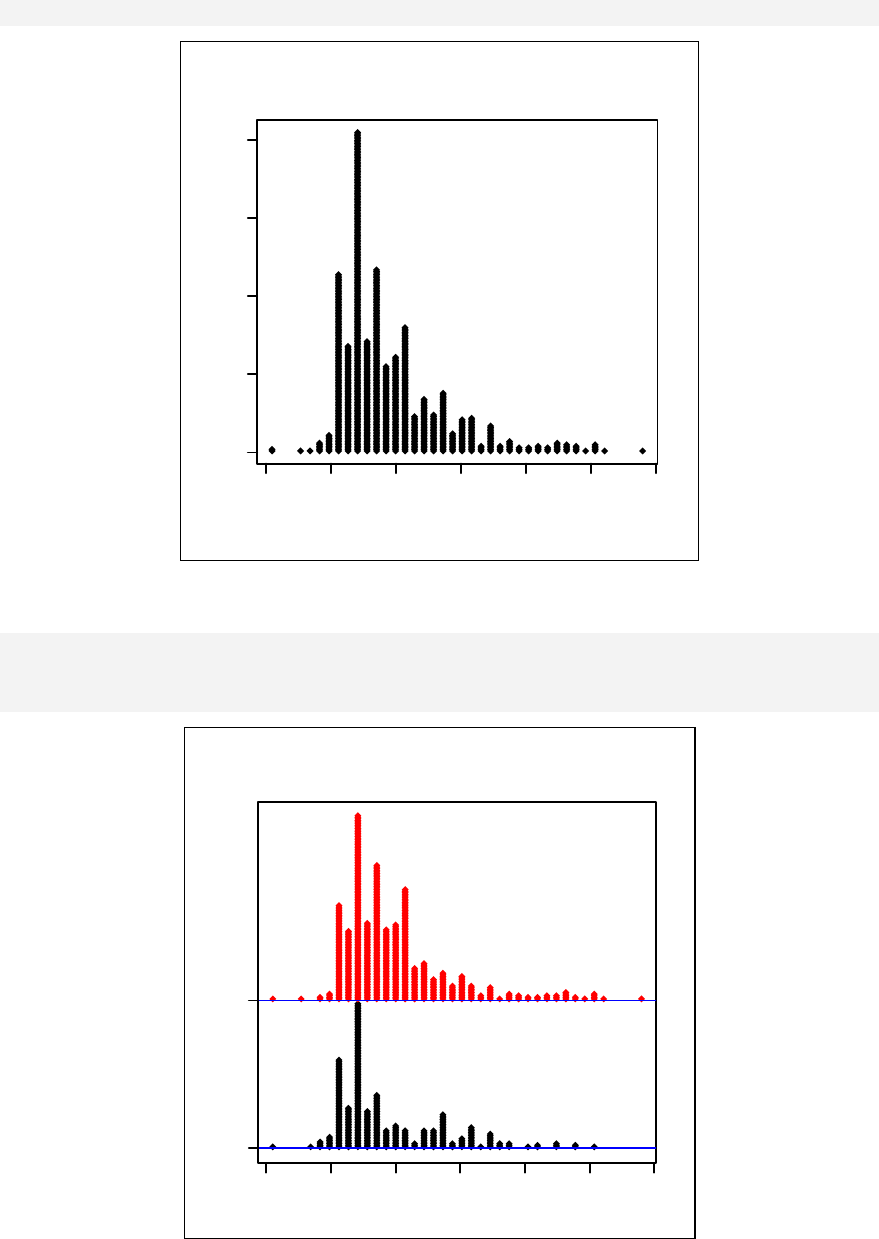

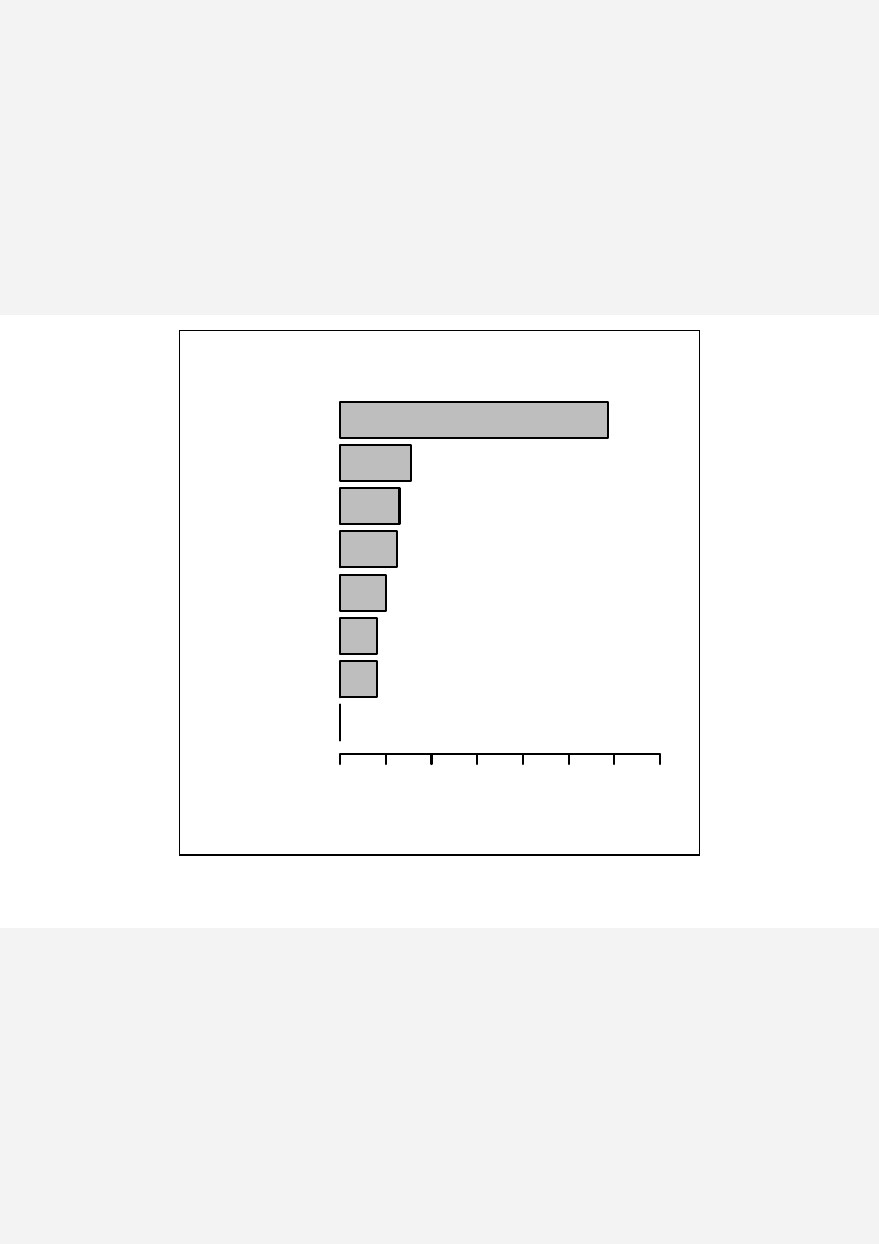

R has very powerful graphing functions that the user has to spend time learning.

Epicalc exploits this power by producing a nice plot of the distribution

automatically whenever a single variable is summarised. A breakdown of the first

variable by a second categorical variable is also simple and graphical results are

automatically displayed. This automatic graphing strategy is also applied to one-

way tabulation and two-way tabulation. Description of the variables and the value

or category labels are fully exploited with these descriptive graphs.

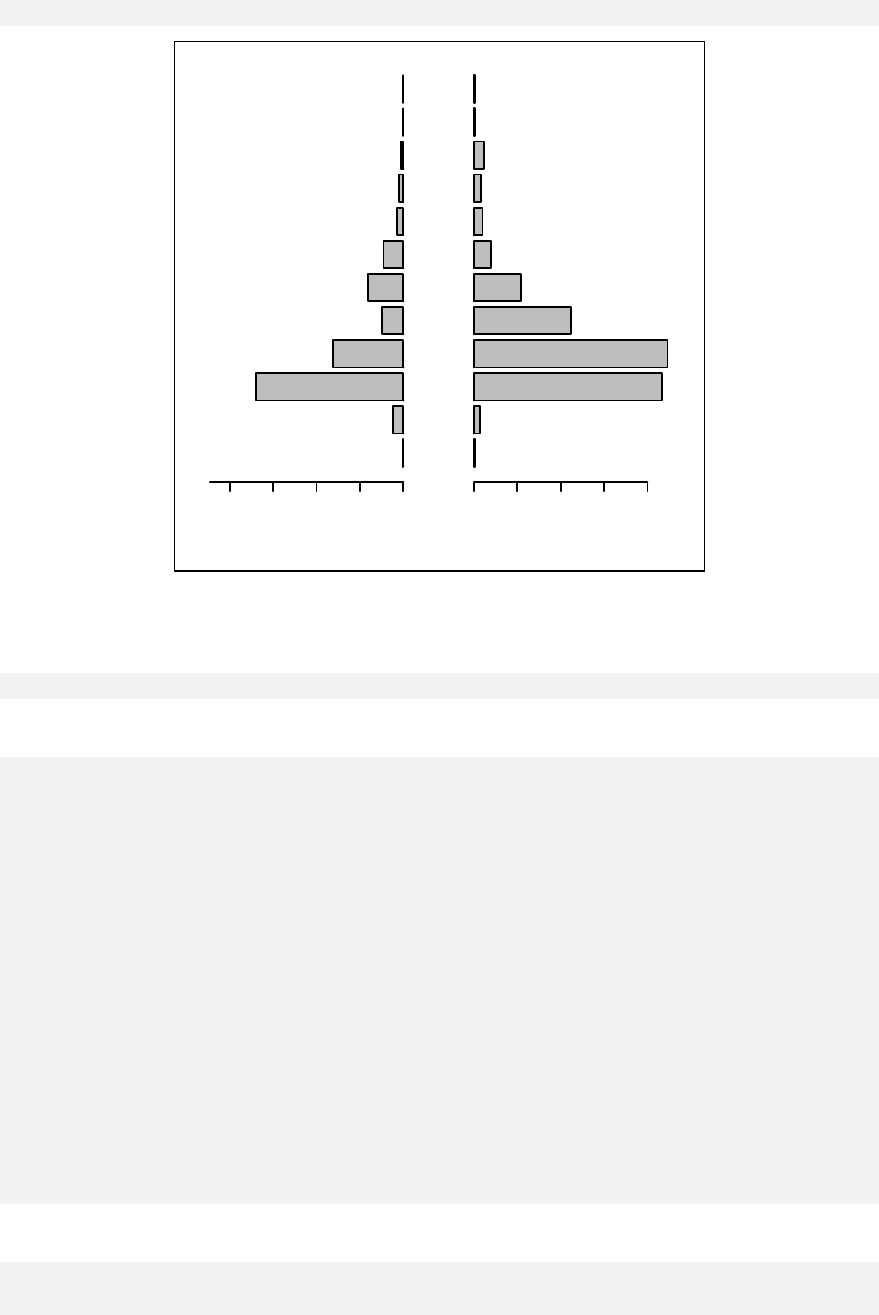

Additional epidemiological functions added in by Epicalc include calculation of

sample size, matched 1:n (n can vary) tabulation, kappa statistics, drawing of ROC

curve from a table or from a logistic regression results, population pyramid plots

from age and sex and follow-up plots.

R has several advanced regression modelling functions such as multinomial logistic

regression, ordinal logistic regression, survival analysis and multi-level modelling.

By using Epicalc nice tables of odds ratios and 95% CI are produced, ready for

simple transferal into a manuscript document with minimal further modification

required.

Although use of Epicalc implies a different way of working with R from

conventional use, installation of Epicalc has no effect on any existing or new

functions of R. Epicalc functions only increase efficiency of data analysis and

makes R easier to use.

iii

This book is essentially about learning R with an emphasis on Epicalc. Readers

should have some background in basic computer usage. With R, Epicalc and the

supplied datasets, the users should be able to go through each lesson learning the

concepts of data management, related statistical theories and the practice of data

analysis and powerful graphing.

The first four chapters introduce R concepts and simple handling of important basic

elements such as scalars, vectors, matrices, arrays and data frames. Chapter 5 deals

with simple data exploration. Date and time variables are defined and dealt with in

Chapter 6 and fully exploited in a real dataset in Chapter 7. Descriptive statistics

and one-way tabulations are automatically accompanied by corresponding graphs

making it rather unlikely that important information is overlooked. Finally, time

plots of exposure and disease onsets are plotted with a series of demonstrating

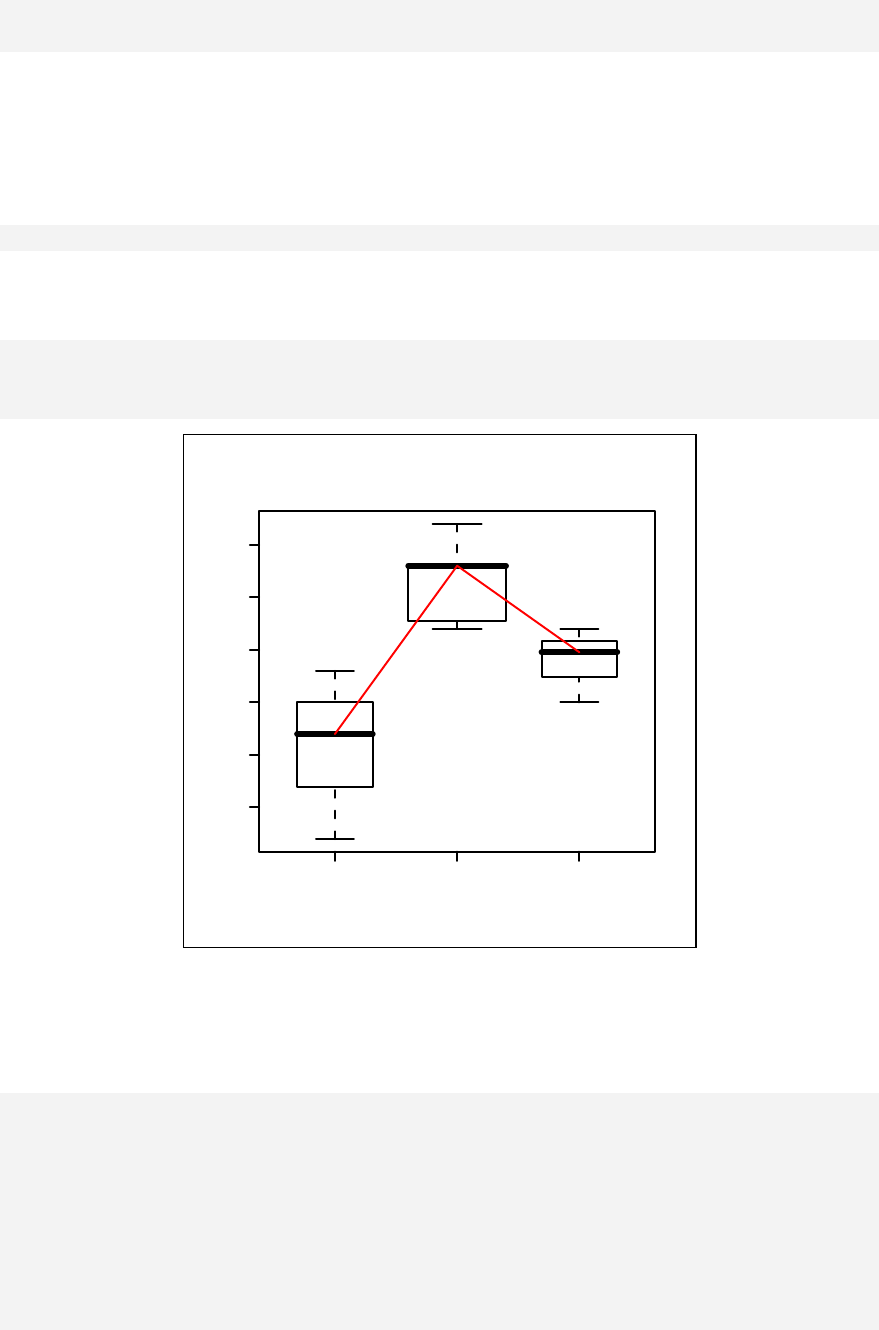

commands. Chapter 8 continues to investigate the outbreak by two-way tabulation.

Various kinds of risk assessment, such as the risk ratio and protective efficacy, are

analysed with numeric and graphic results.

Chapter 9 extends the analysis of the dataset to deal with levels of association or

odds ratios. Stratified tabulation, the Mantel-Haenzsel odds ratio, and test of

homogeneity of odds ratios are explained in detail. All results are complemented by

simultaneous plots. With these graphs, the concept of confounding is made more

understandable.

Before proceeding further, the reader has a thorough exercise of data cleaning and

standard data manipulation in Chapter 10. Simple looping commands are

introduced to increase the efficiency of data management. Subsequently, and from

time to time in the book, readers will learn how to develop these loops to create

powerful graphs.

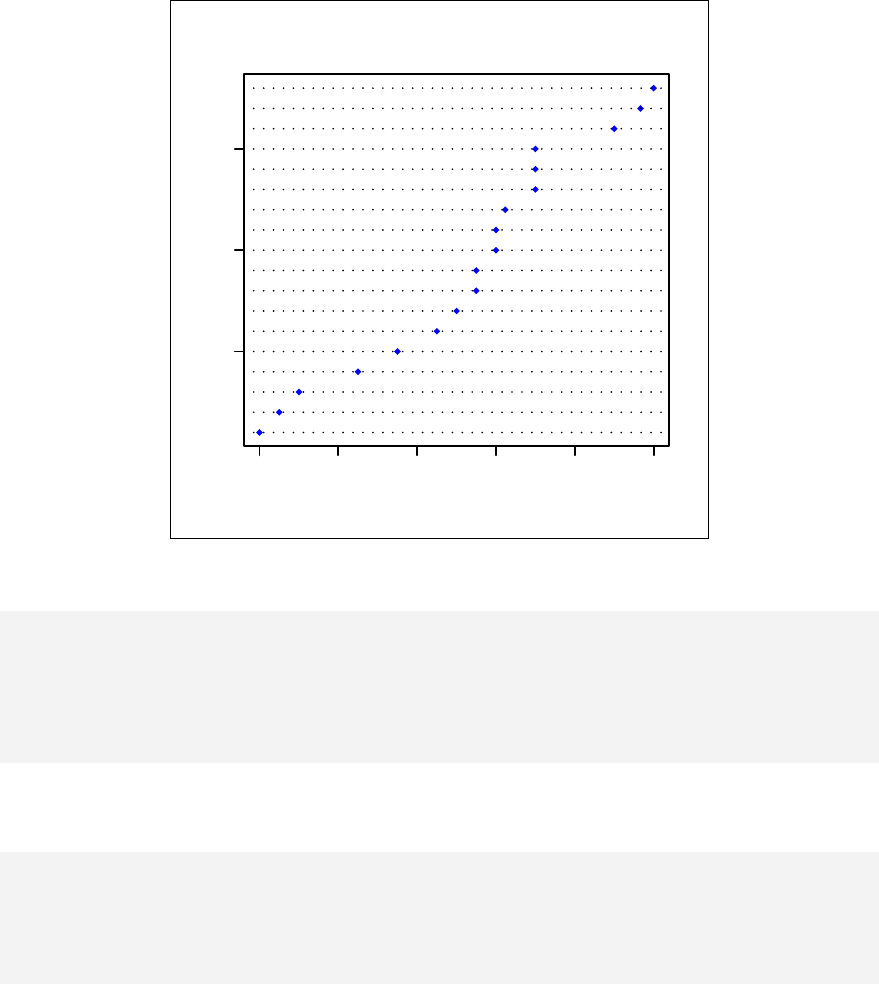

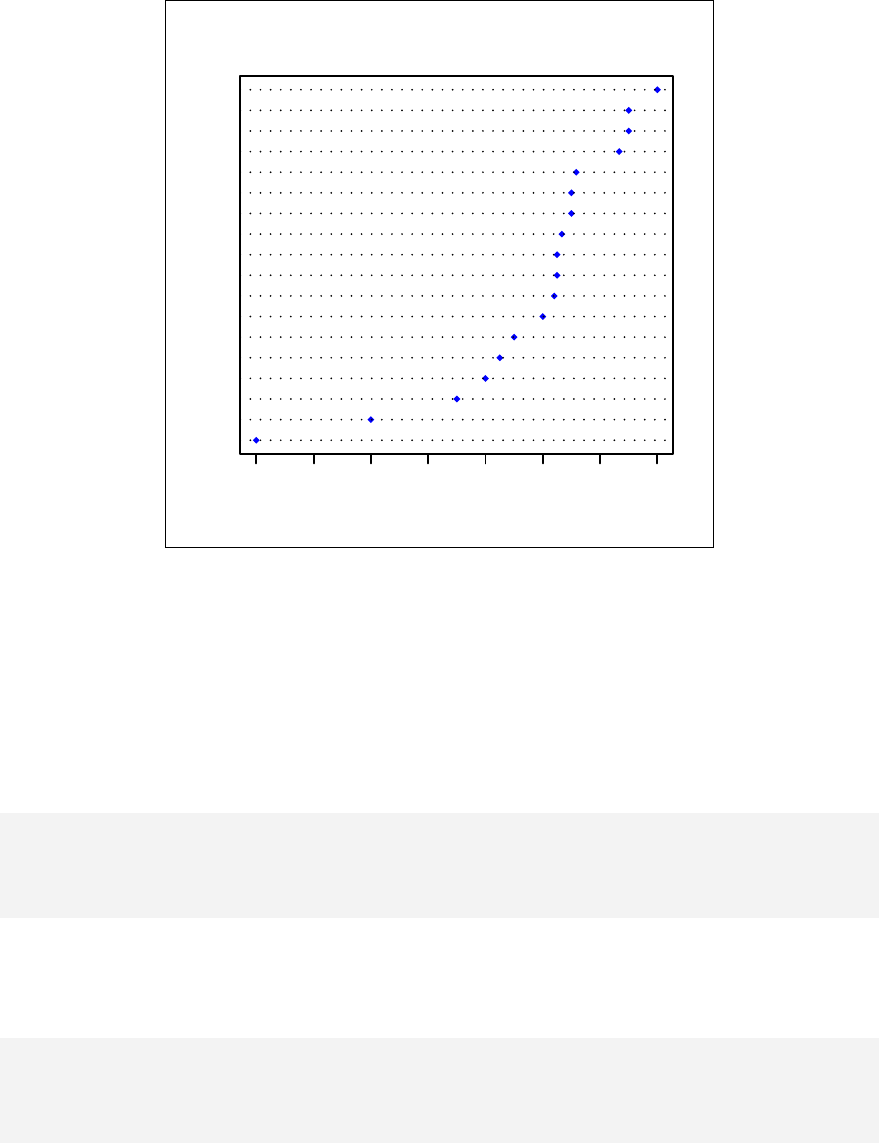

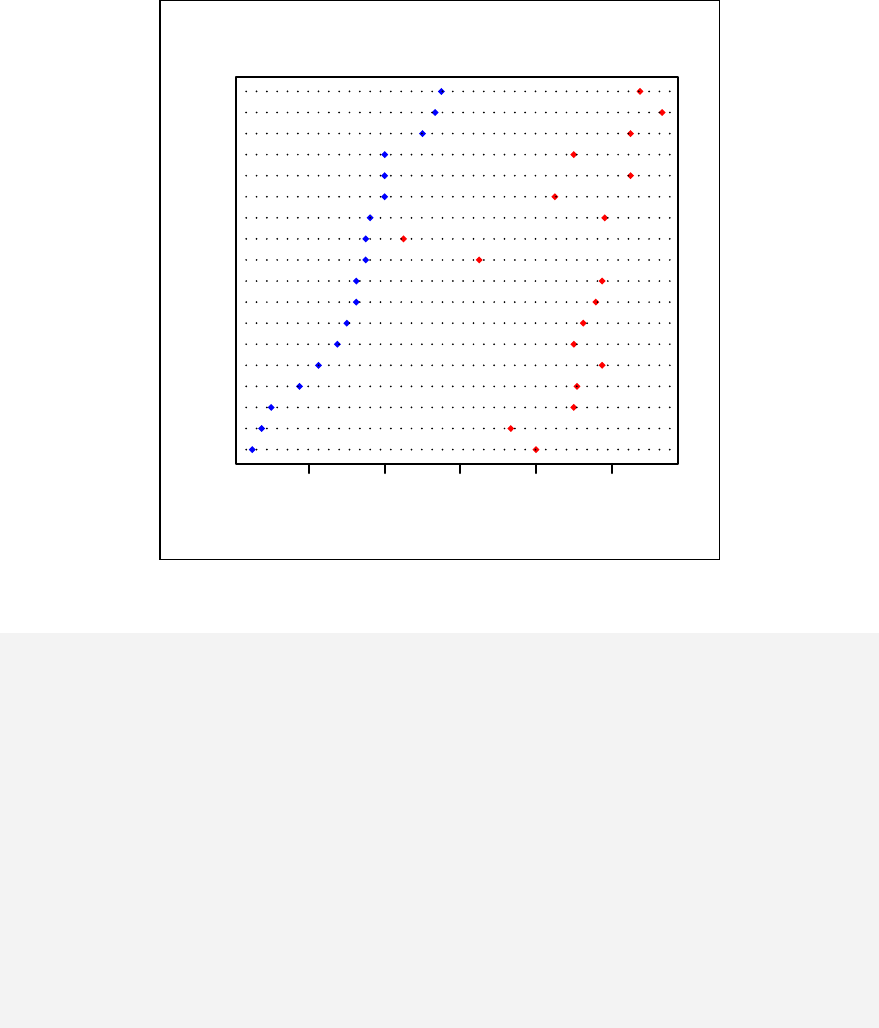

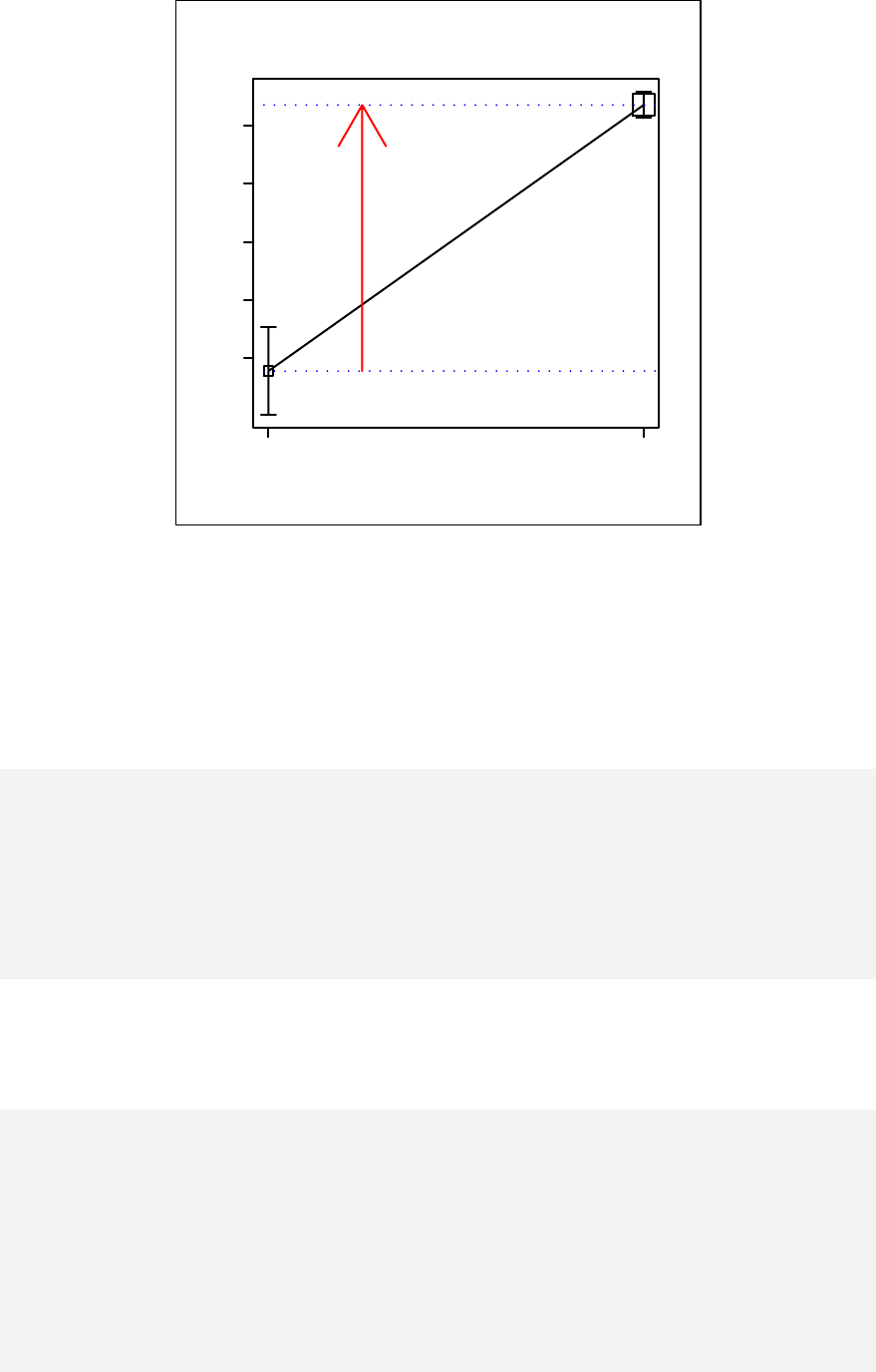

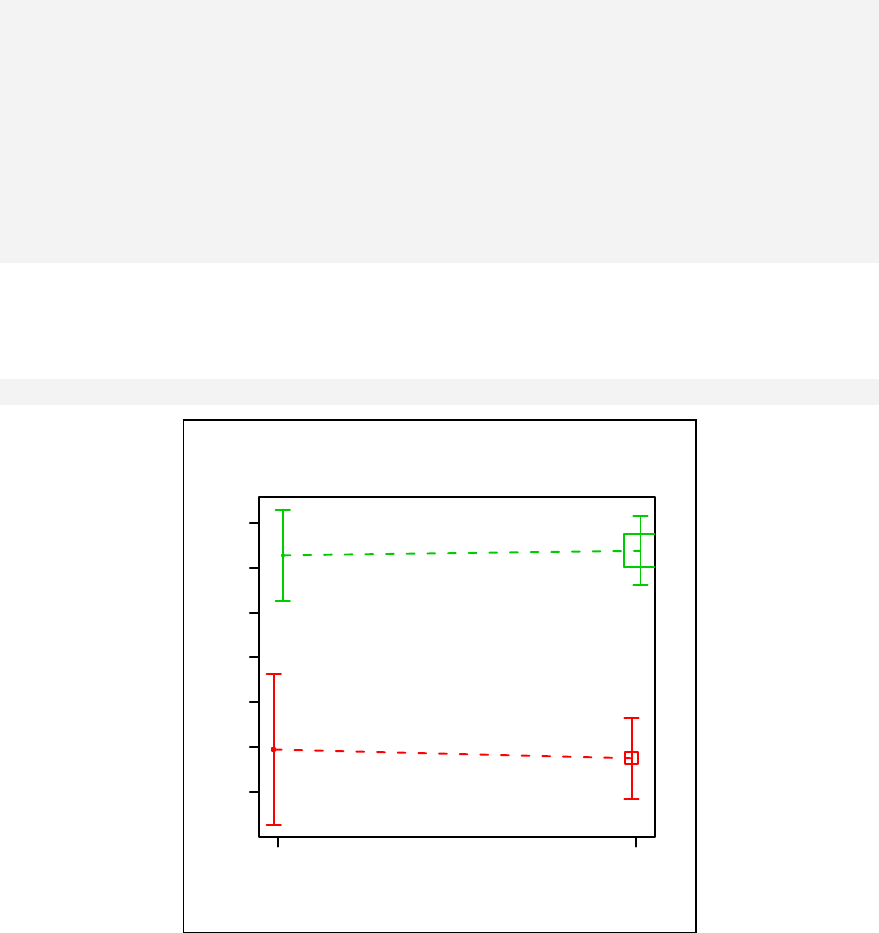

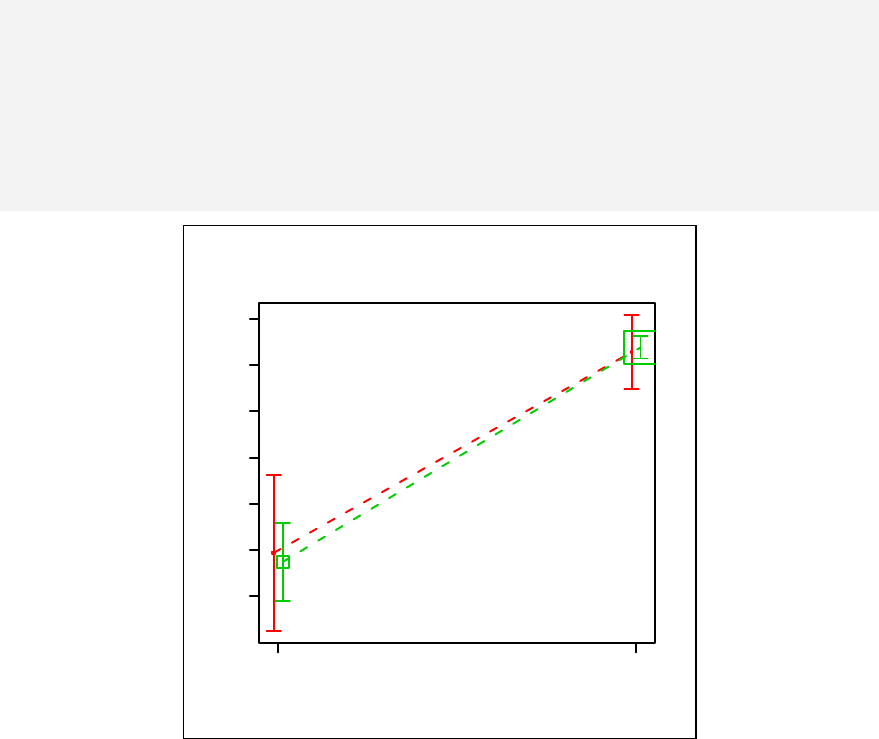

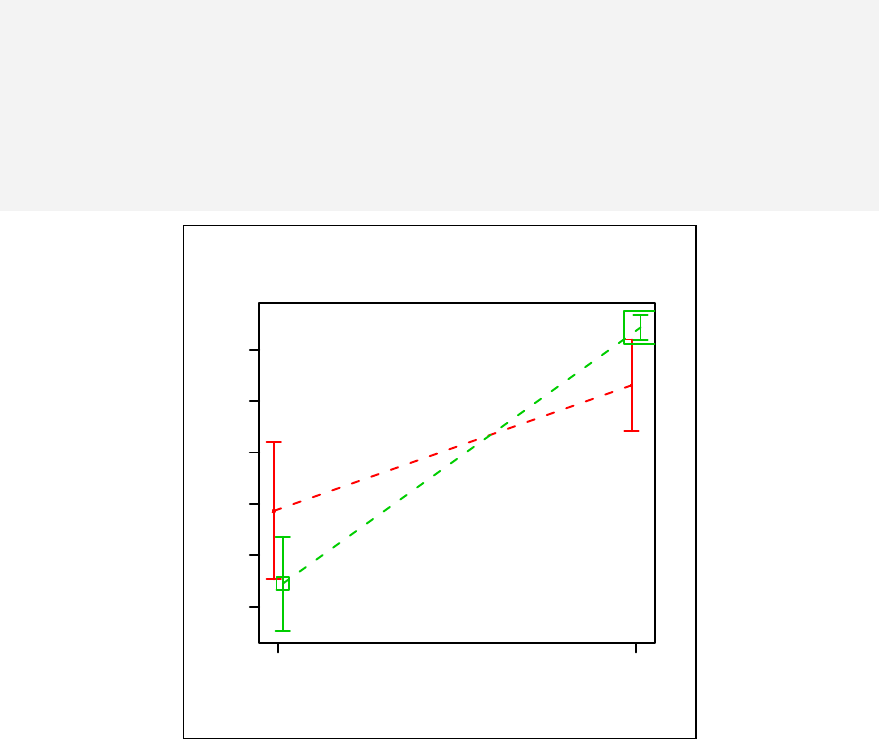

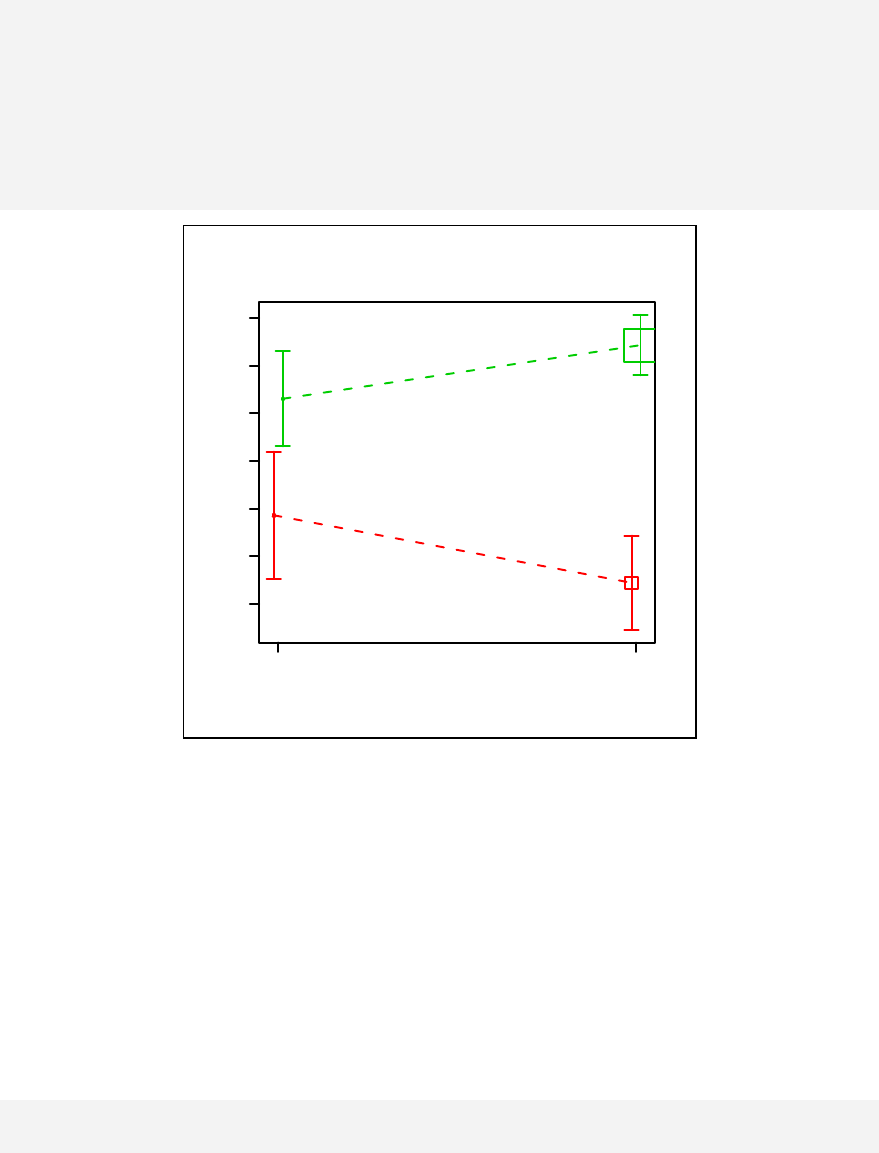

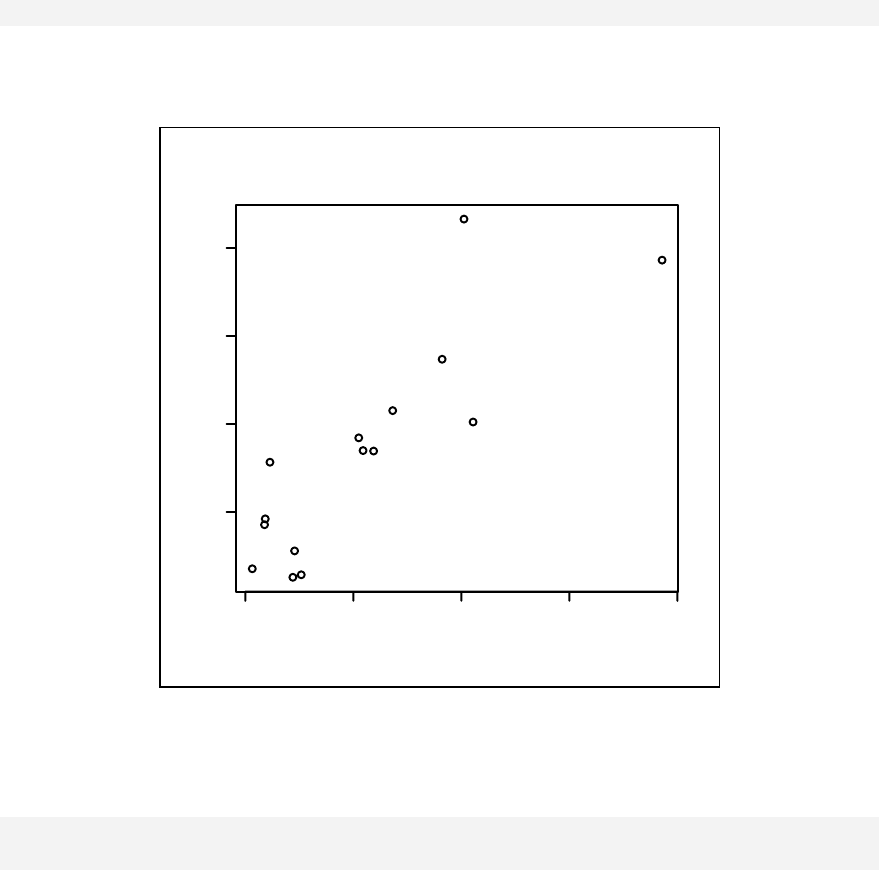

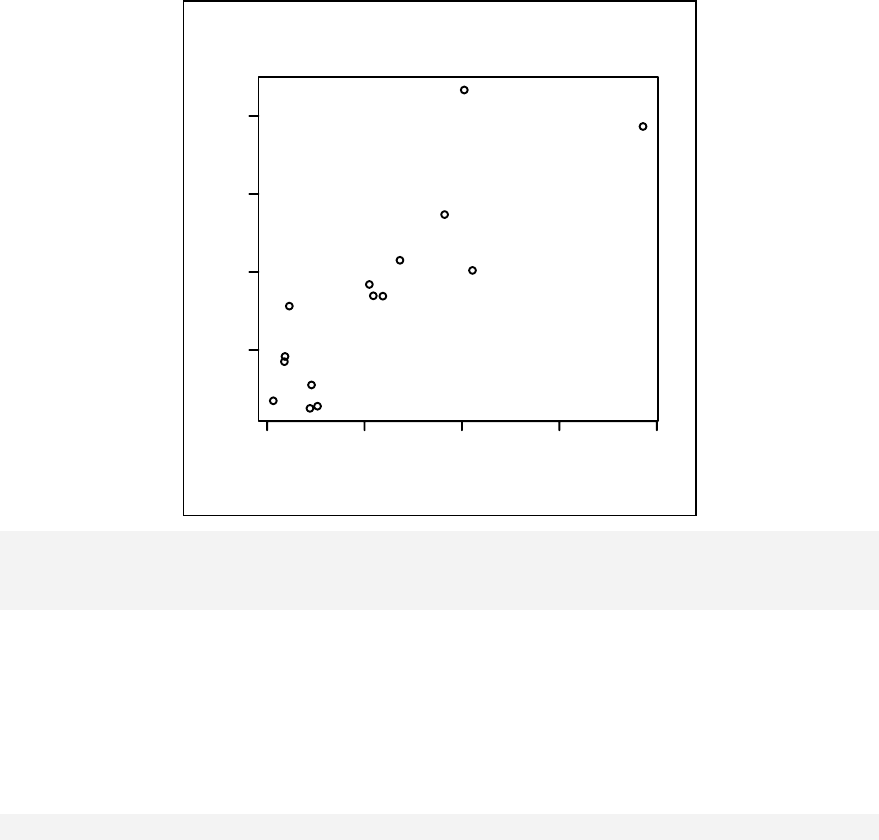

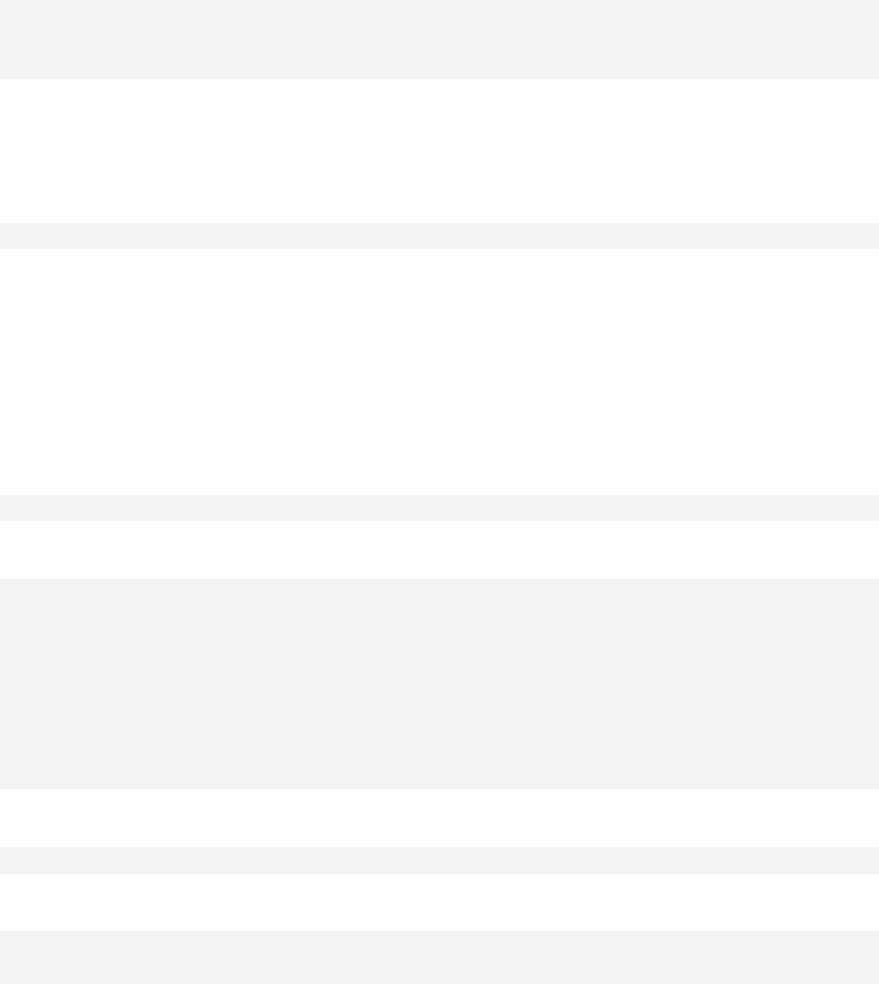

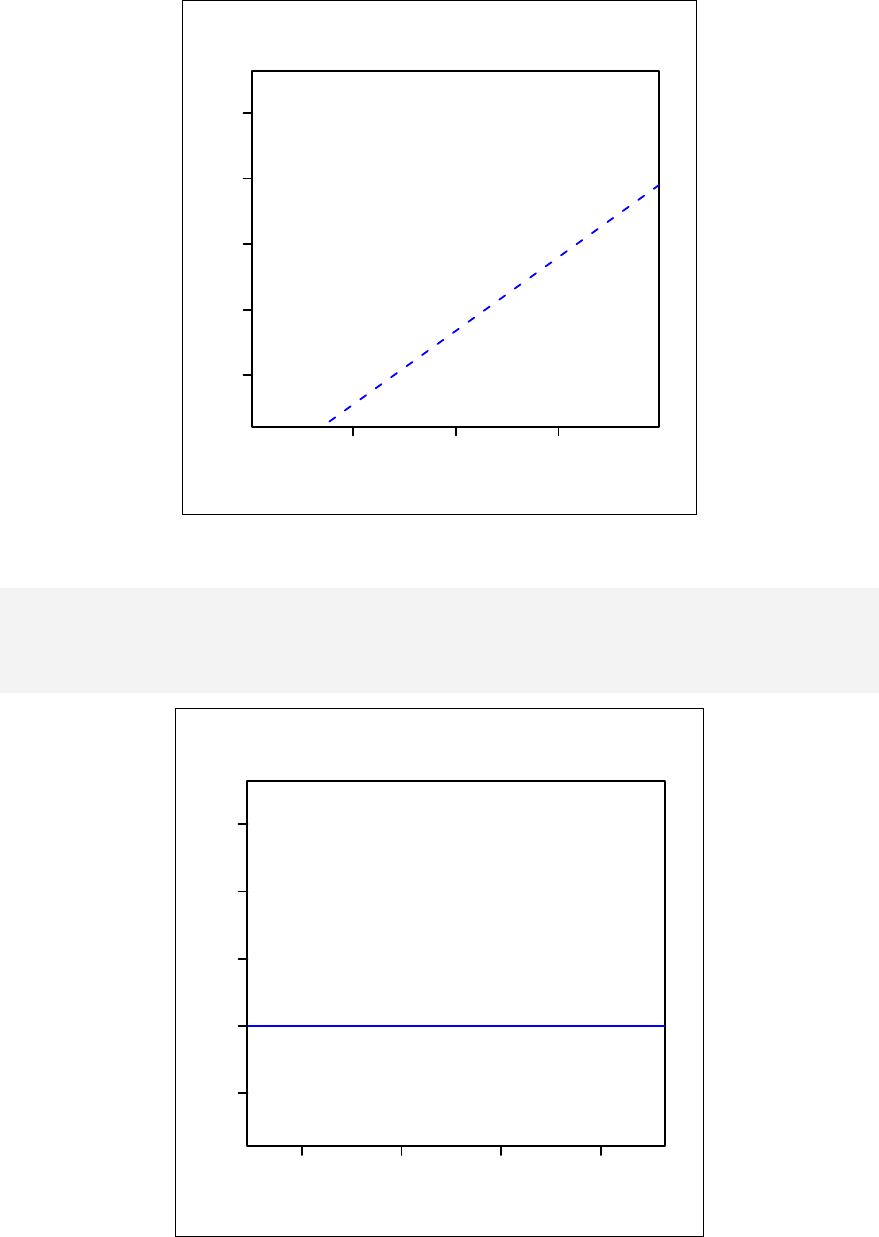

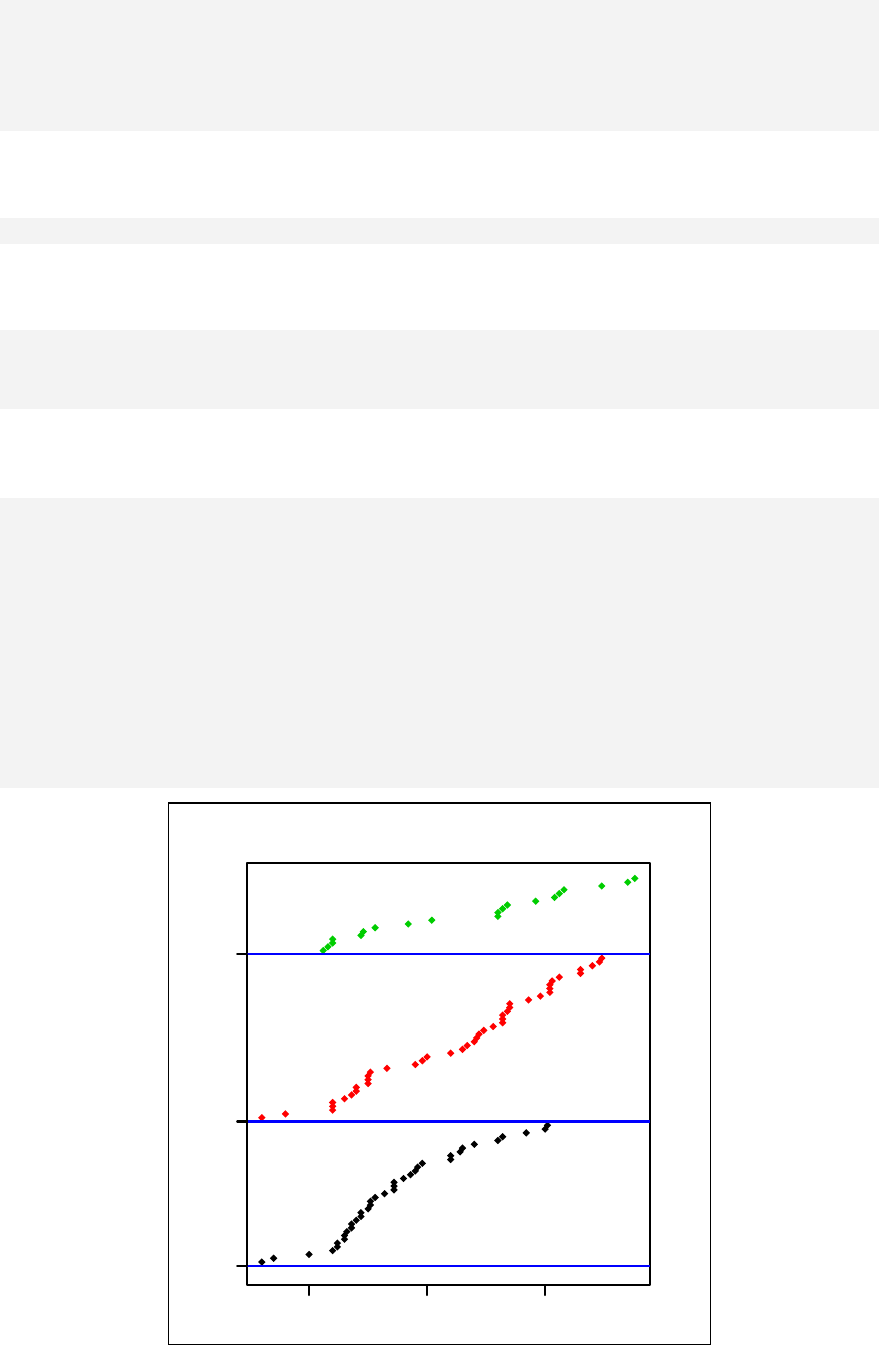

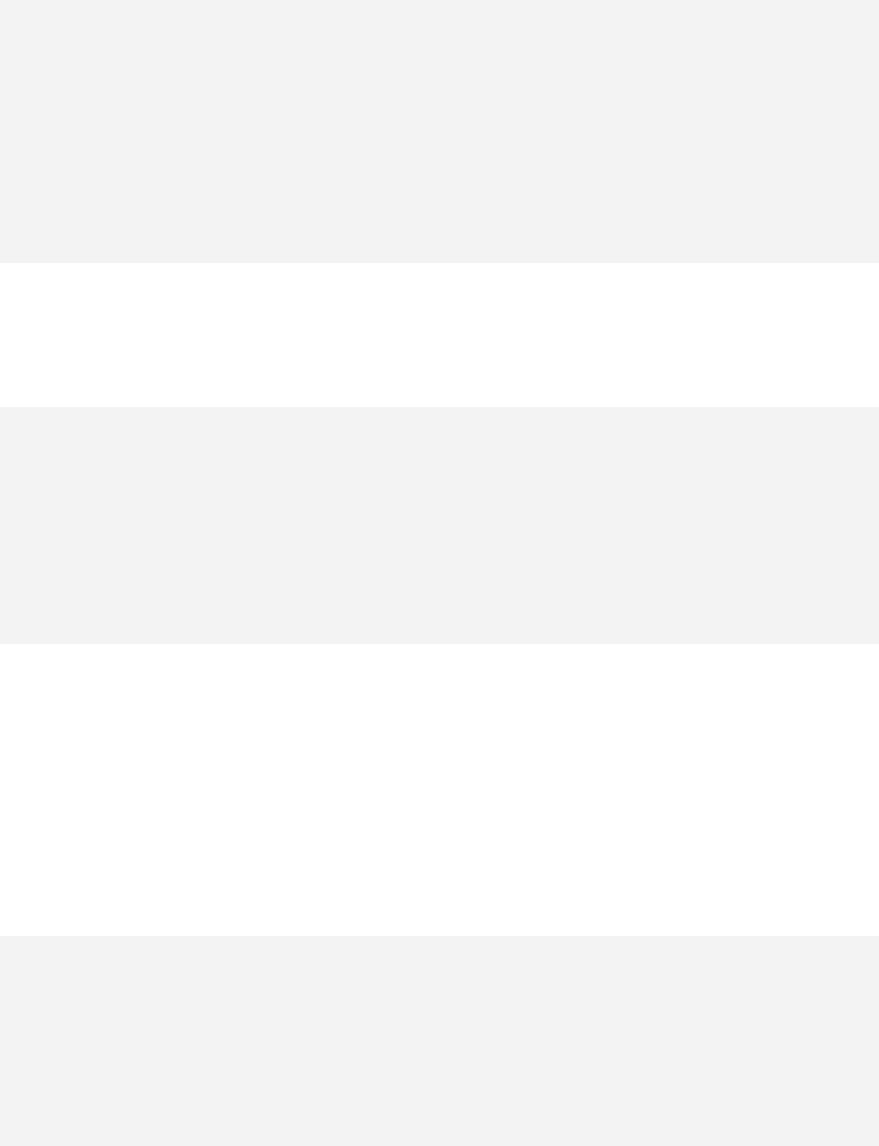

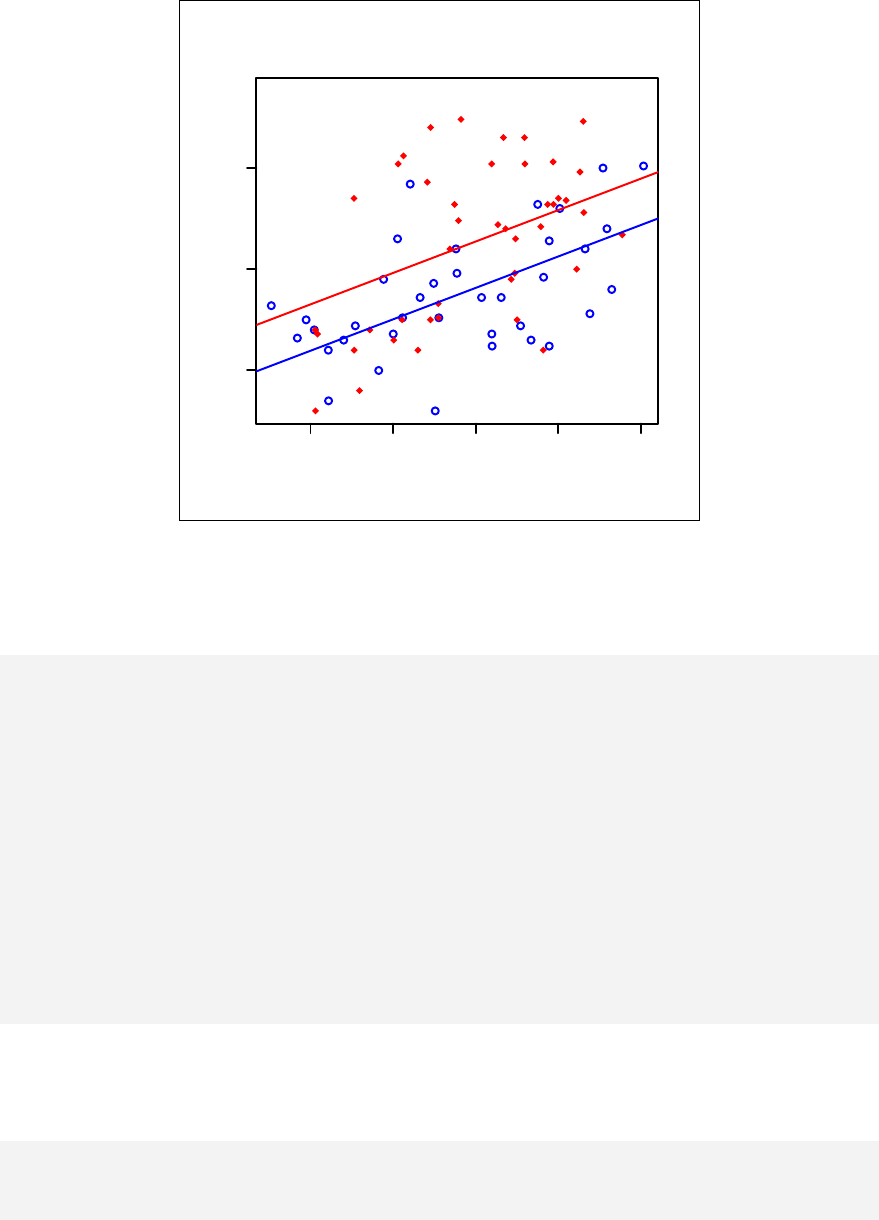

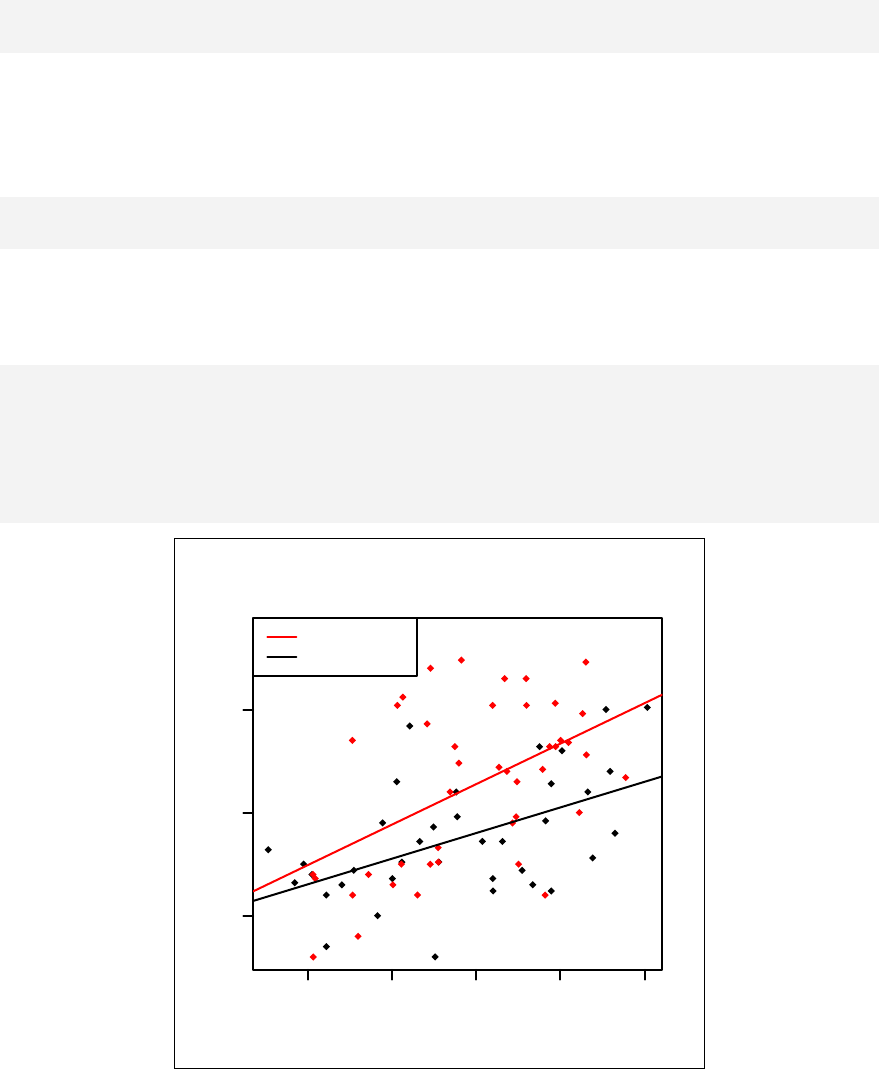

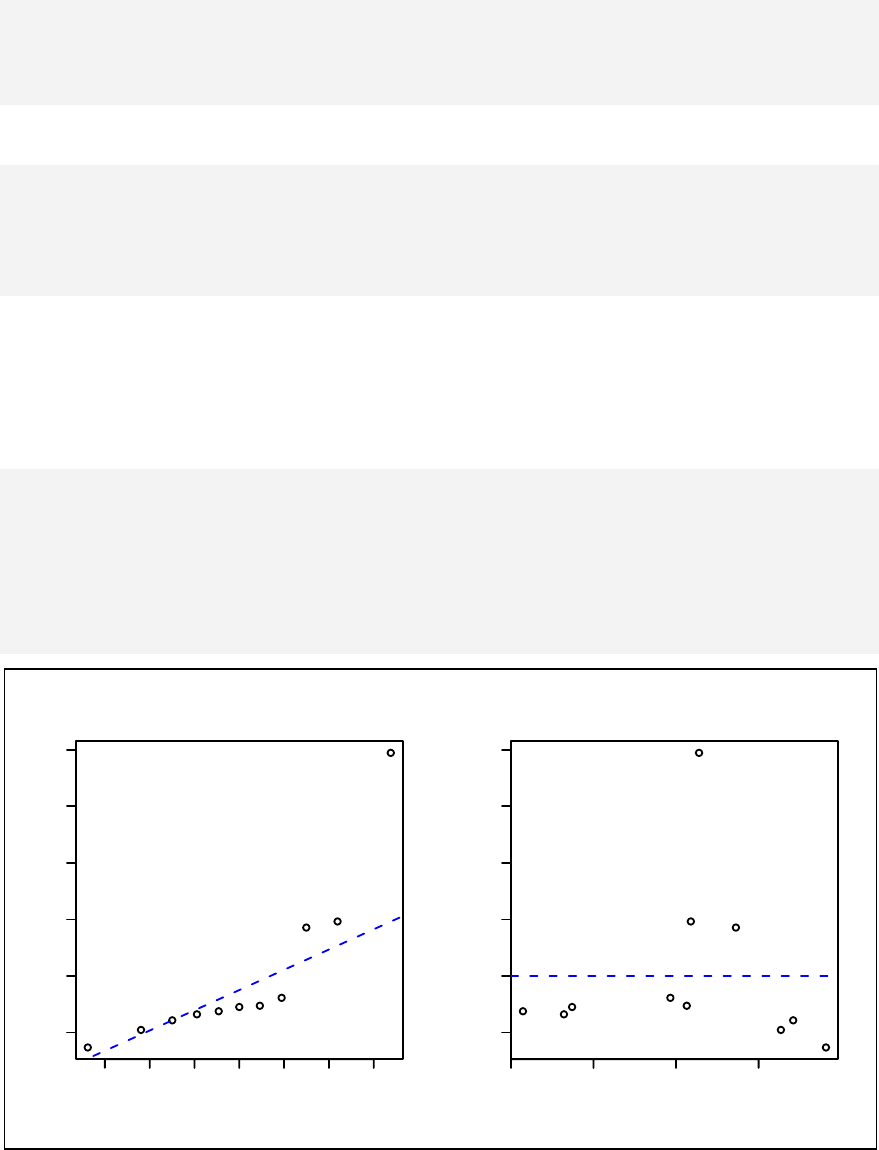

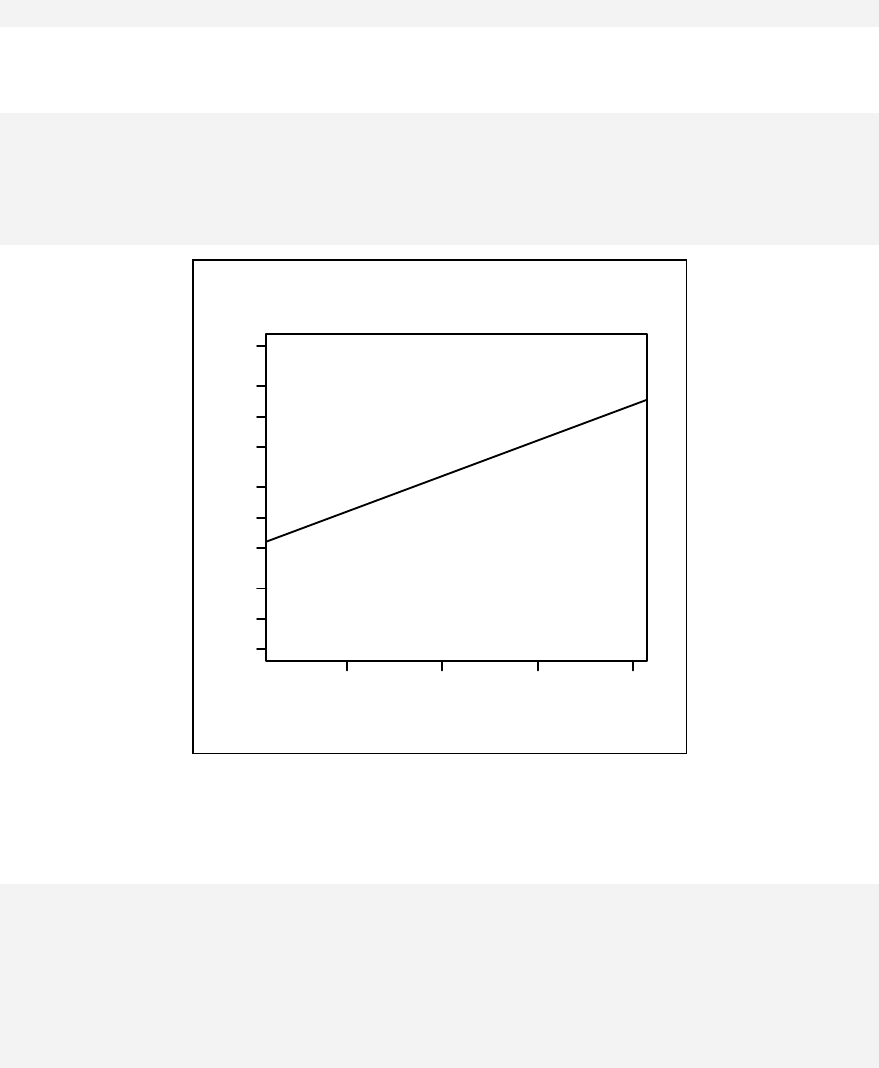

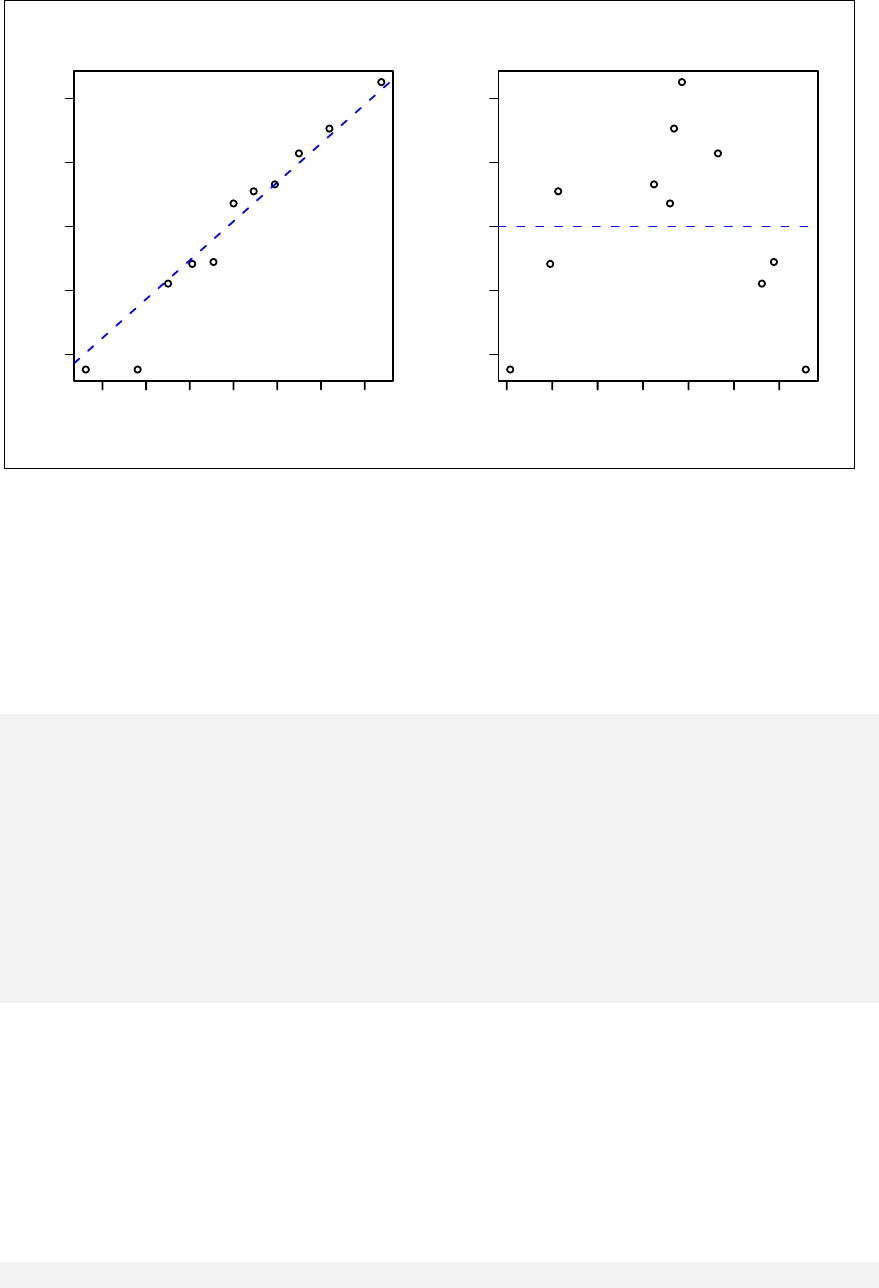

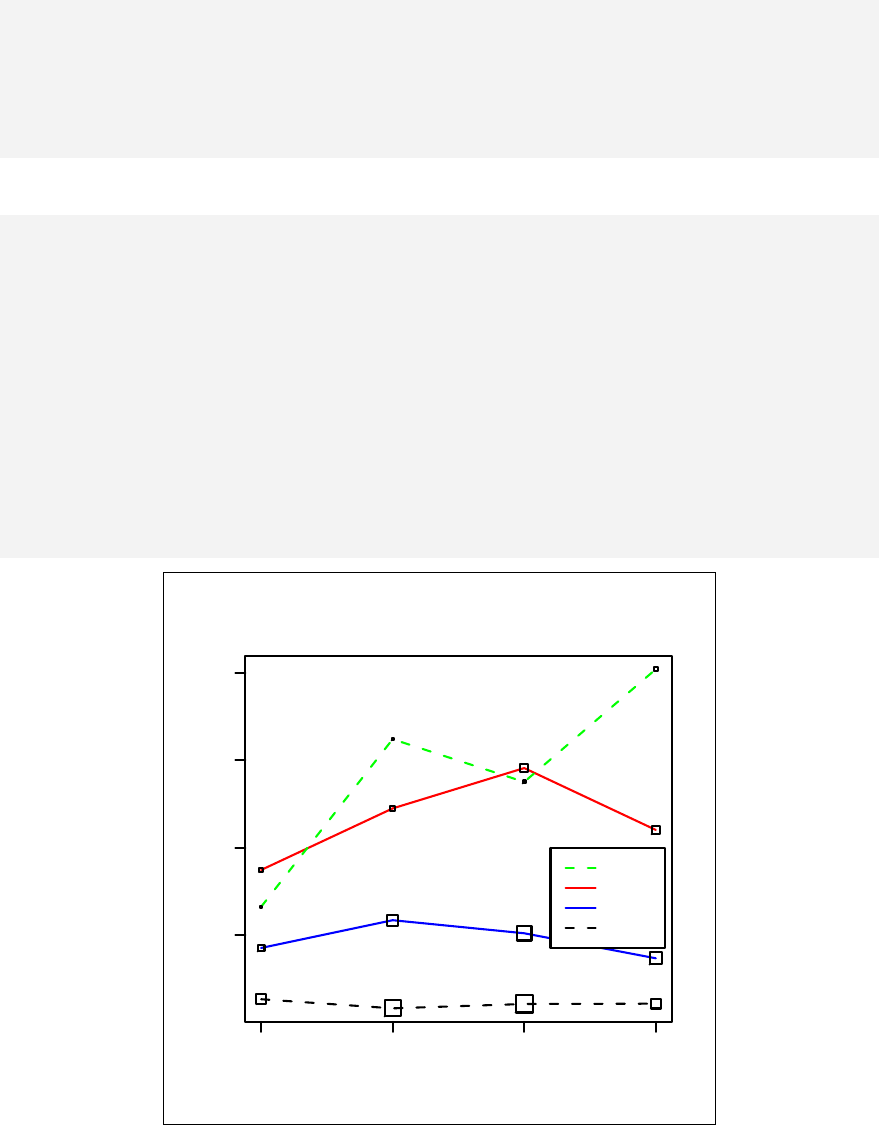

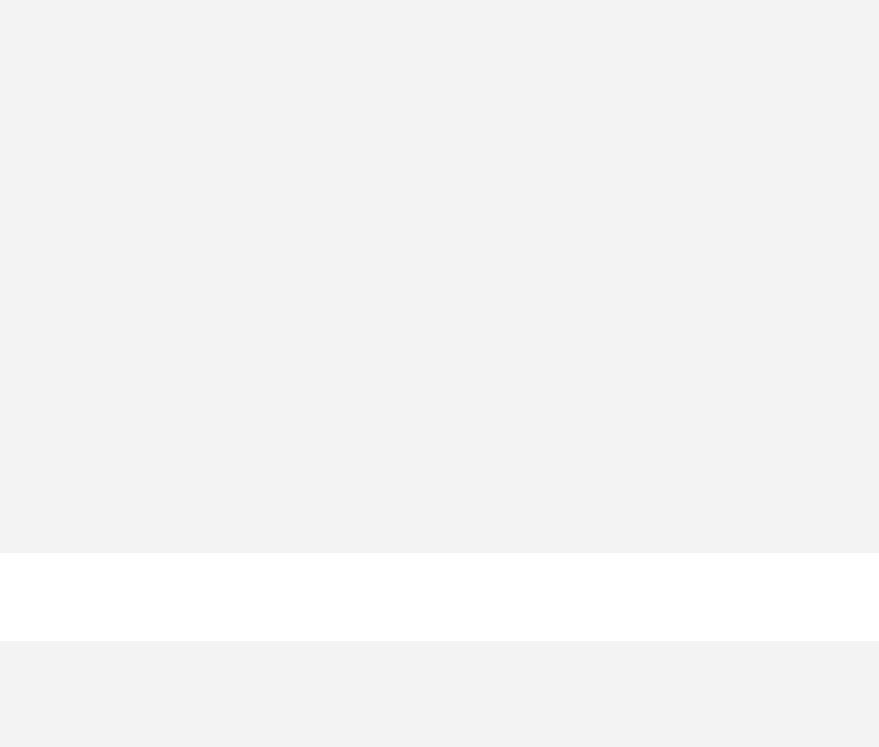

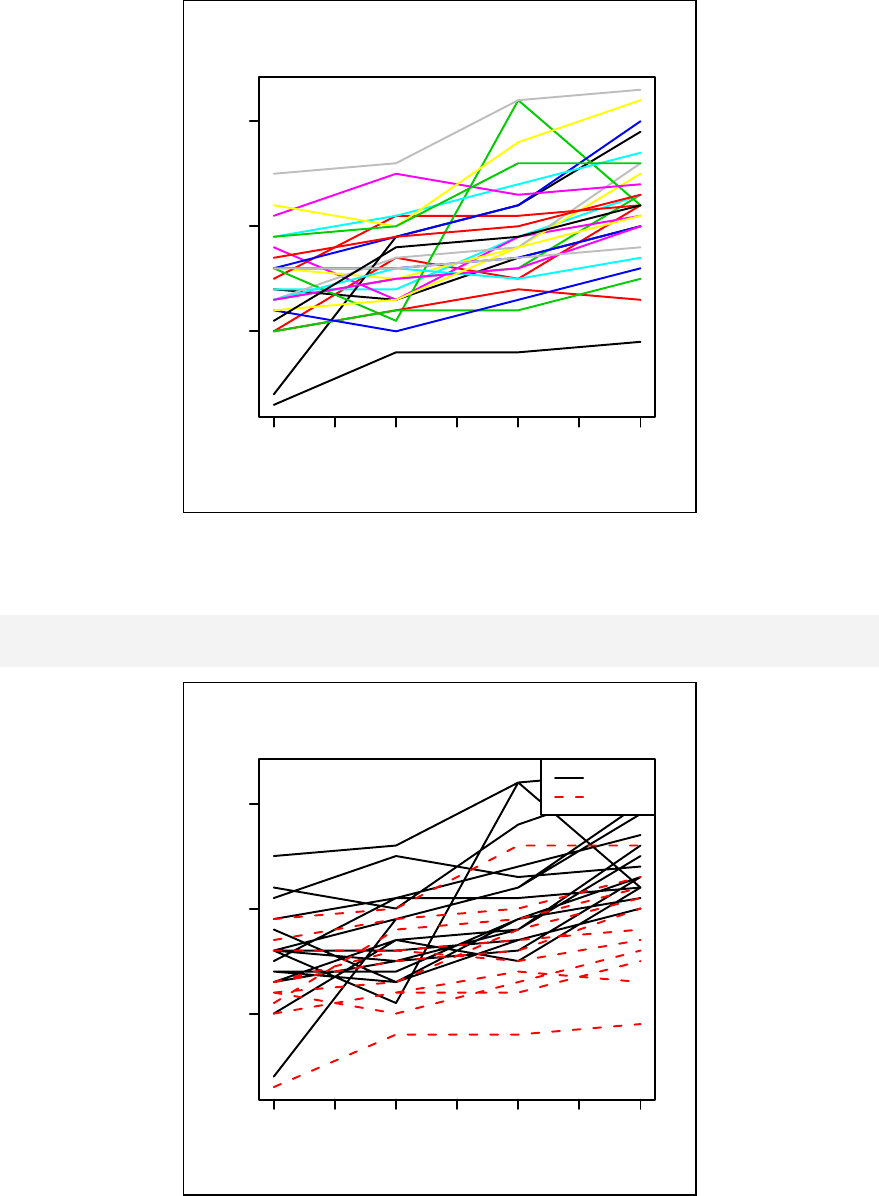

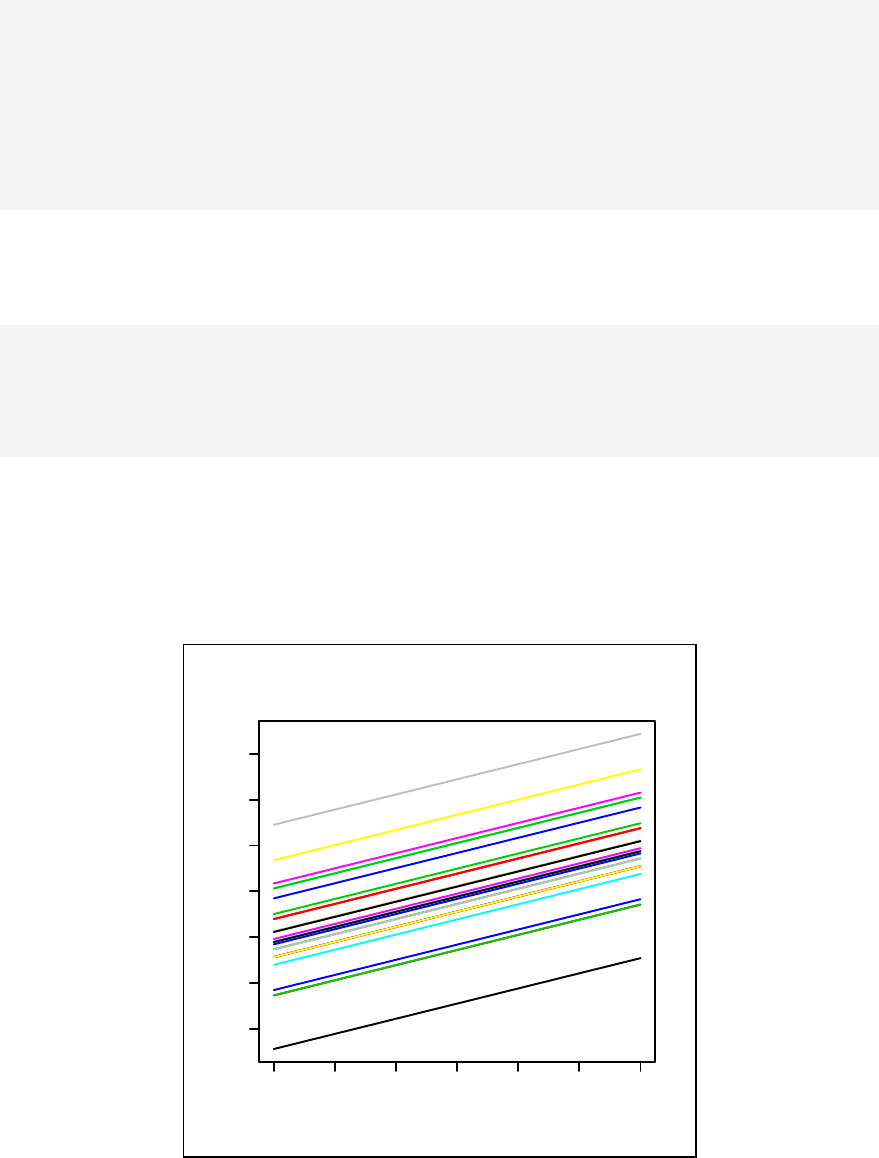

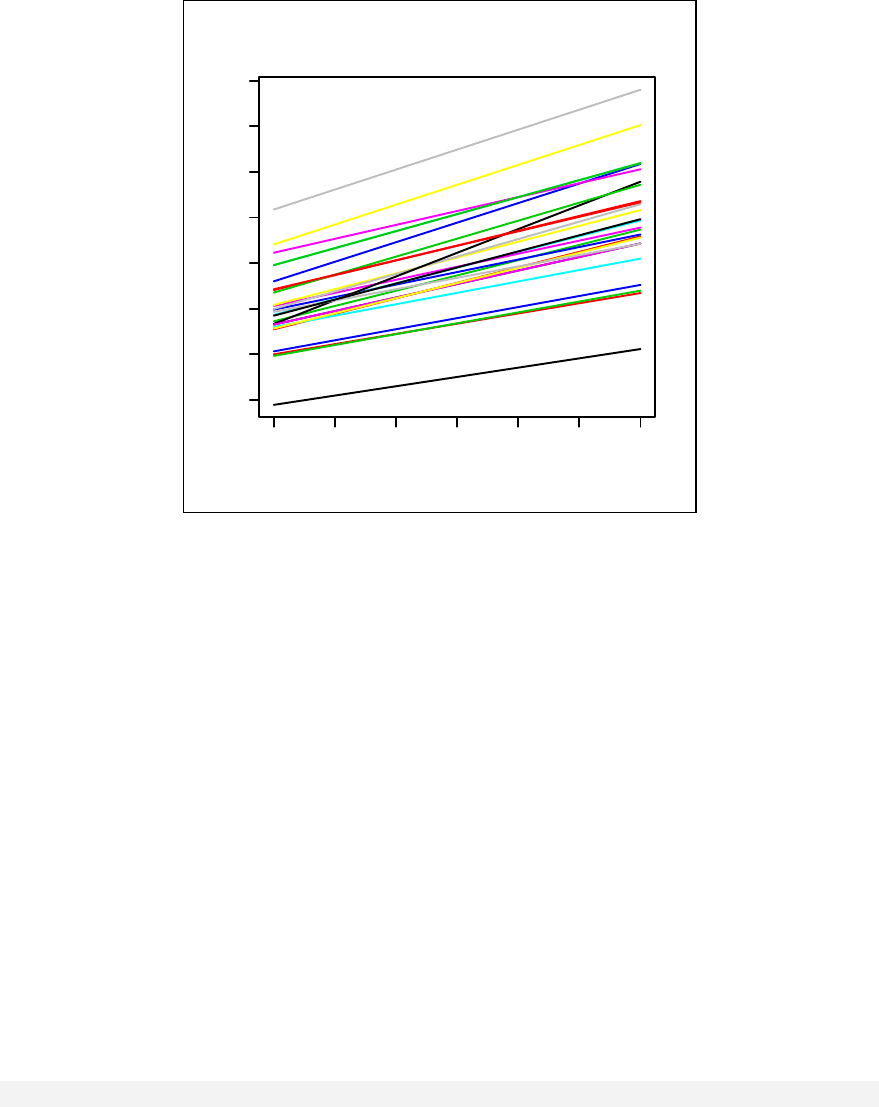

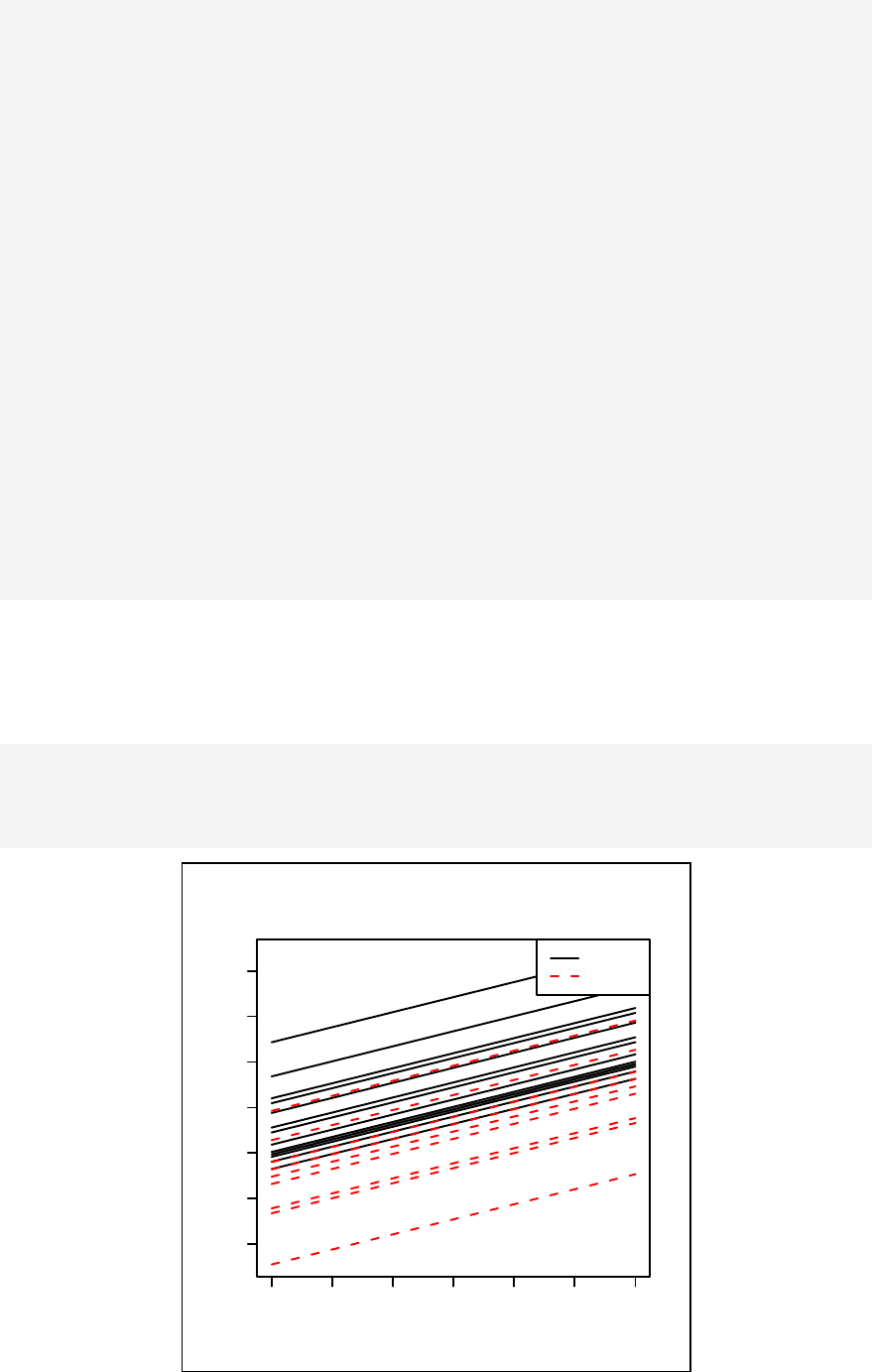

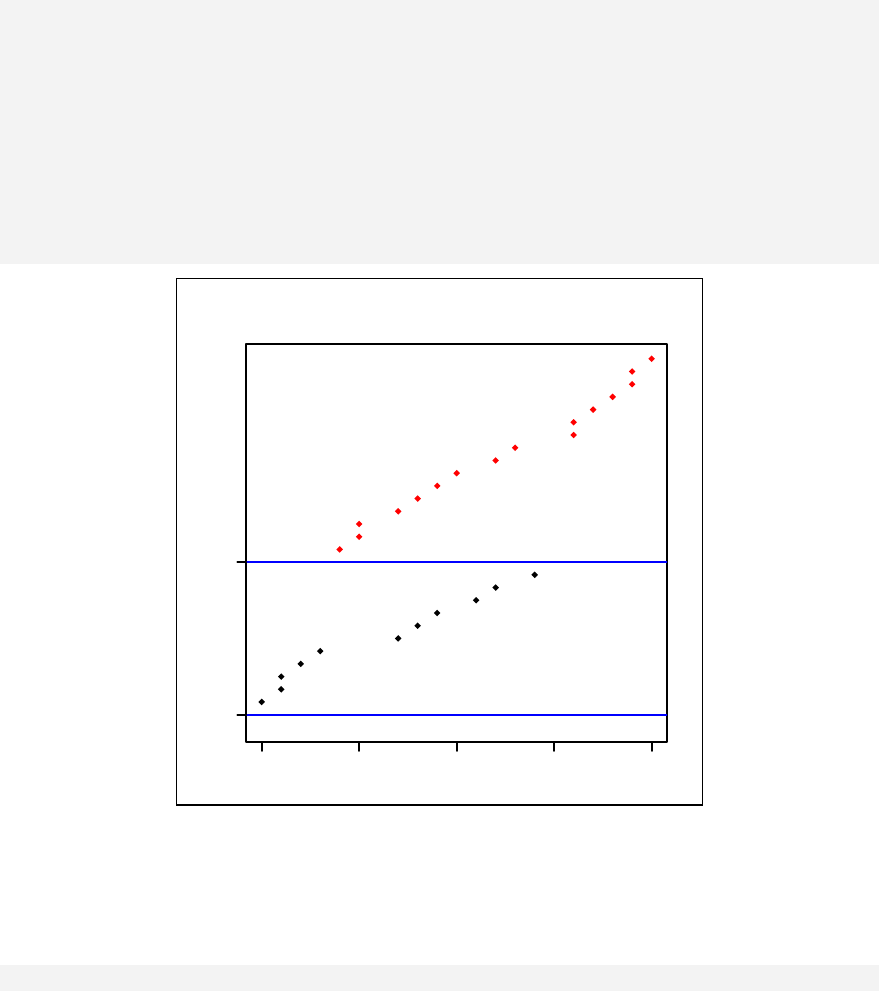

Scatter plots, simple linear regression and analysis of variance are presented in

Chapter 11. Stratified scatter plots to enhance the concept of confounding and

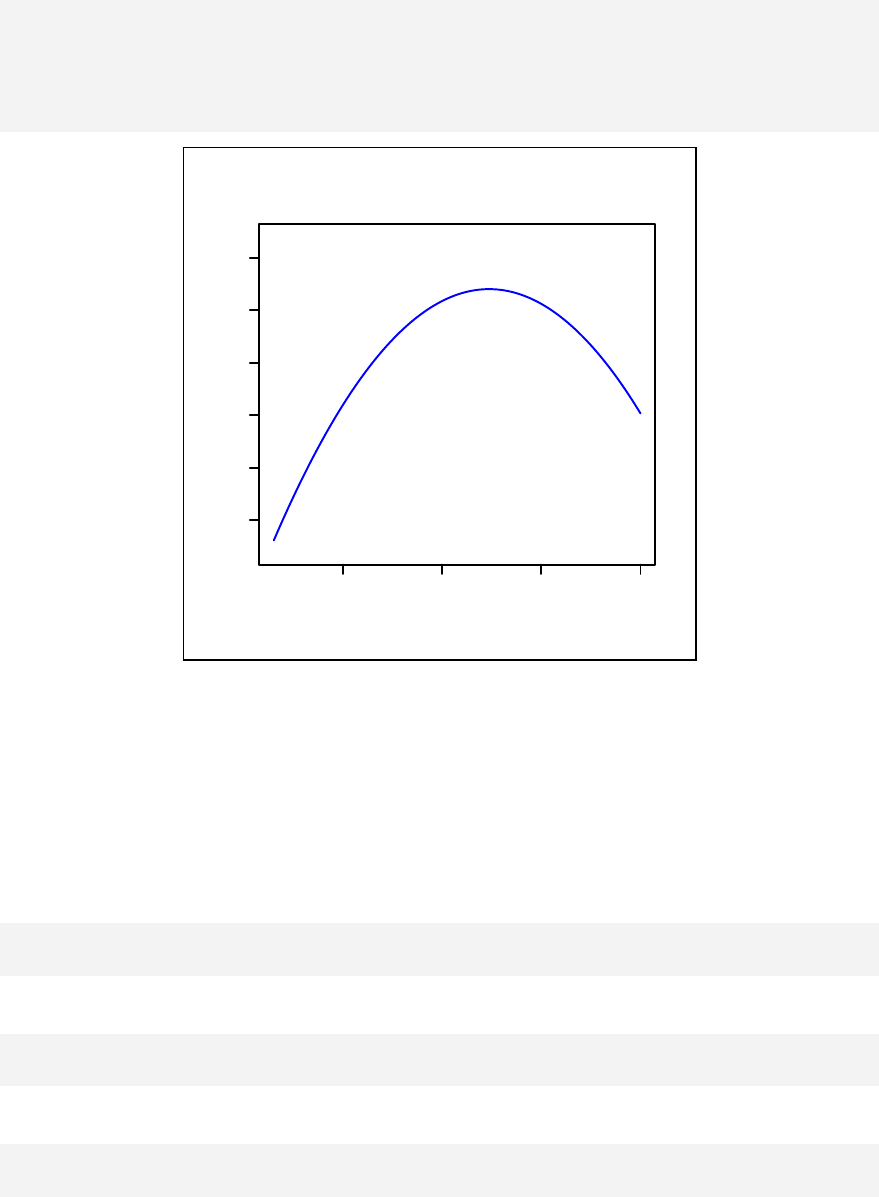

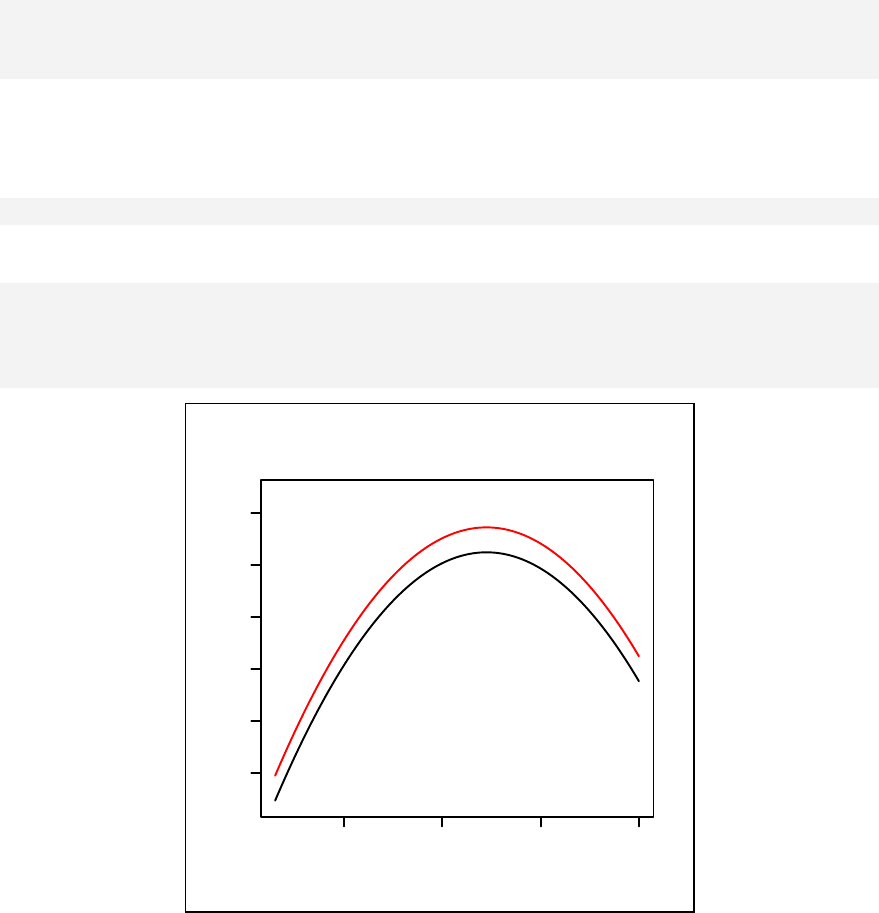

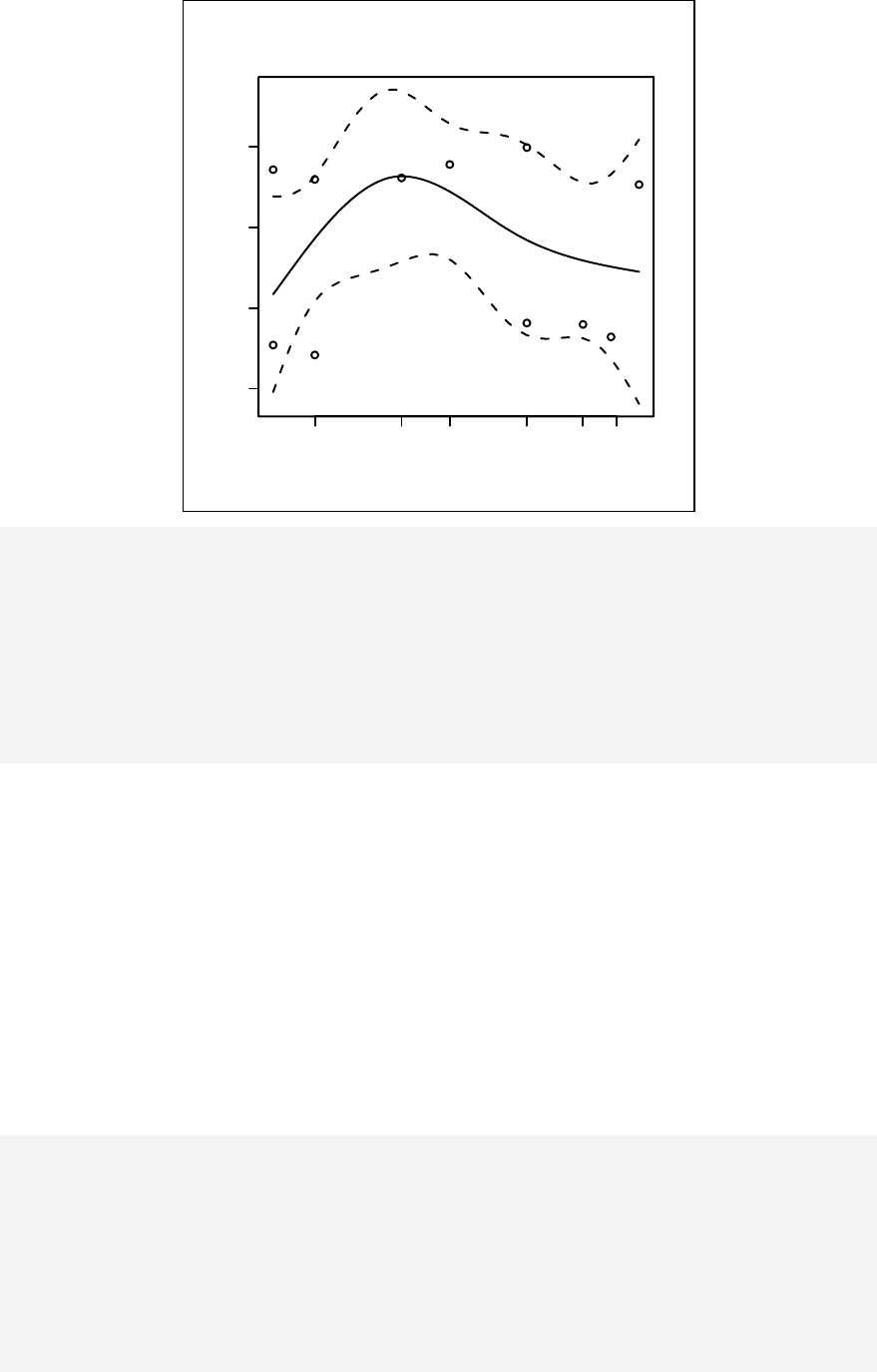

interaction for continuous outcome variables are given in Chapter 12. Curvilinear

models are discussed in Chapter 13. Linear modelling is extended to generalized

linear modelling in Chapter 14.

For binary outcome variables, Chapter 15 introduces logistic regression with

additional comparison with stratified cross-tabulation learned in Chapter 9. The

concept of a matched case control study is discussed in Chapter 16 with matched

tabulation for 1:1 and 1:n matching. Finally, conditional logistic regression is

applied. Chapter 17 introduces polytomous logistic regression using a case-control

study in which one type of case series is compared with two types of control

groups. Ordinal logistic regression is applied for ordered outcomes in Chapter 18.

iv

For a cohort study, with grouped exposure datasets, Poisson regression is used in

Chapter 19. Extra-Poisson regression for overdispersion is also discussed. This

includes modeling the outcome using the negative binomial error distribution.

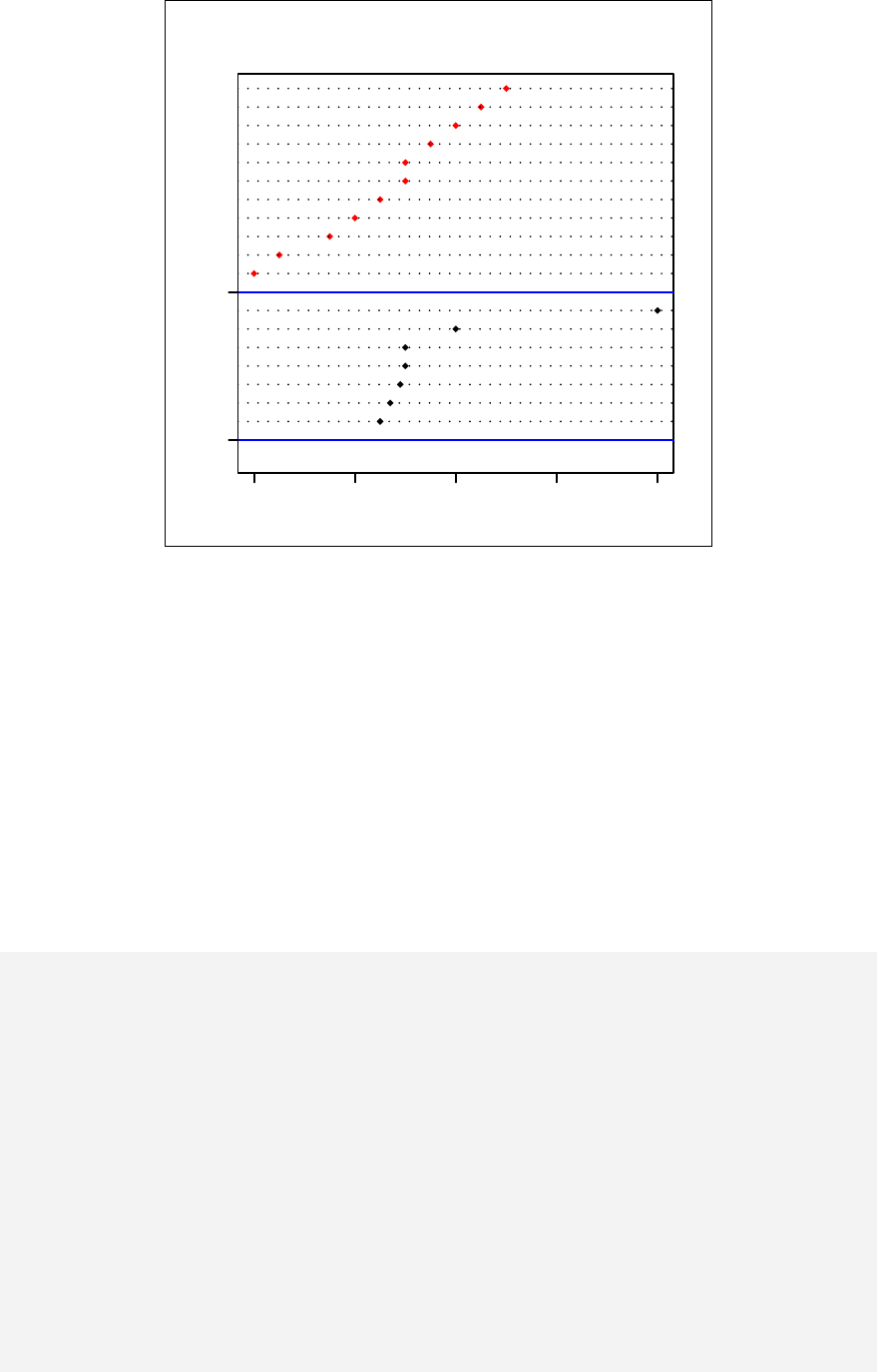

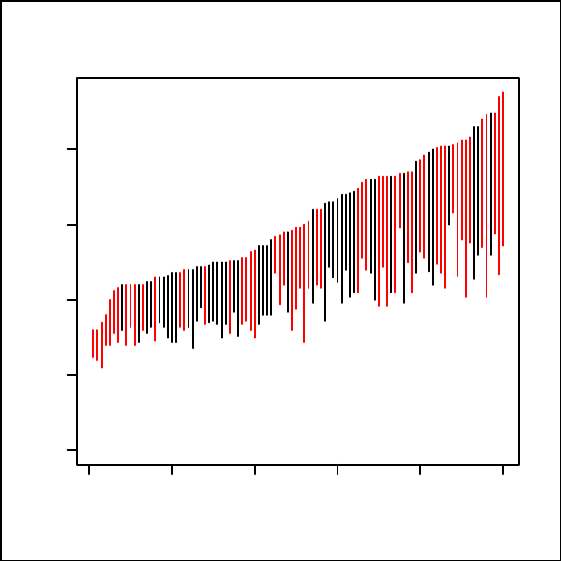

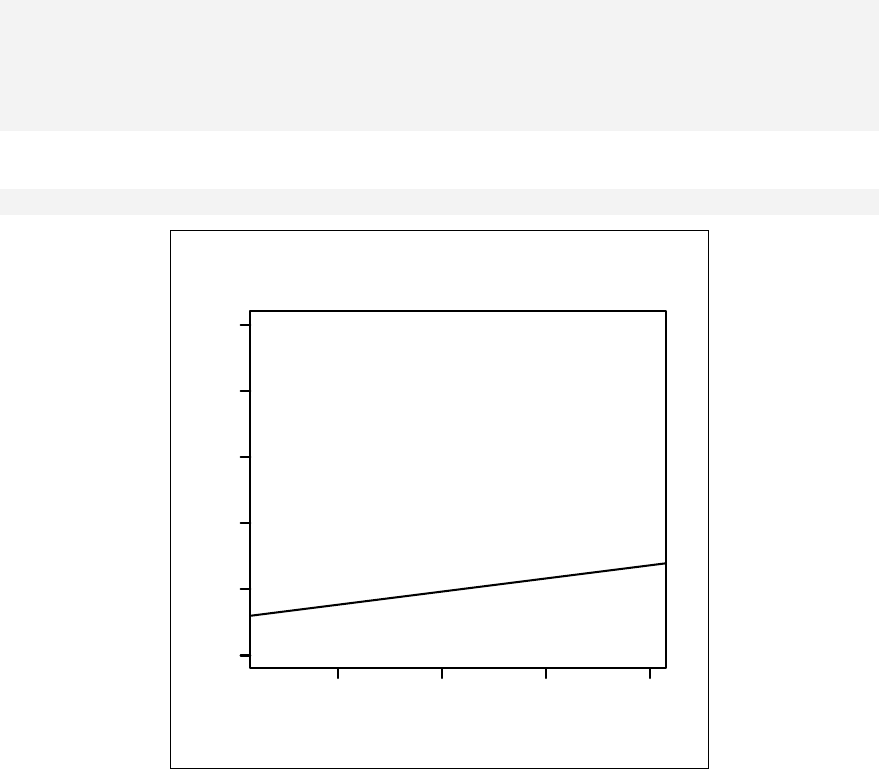

Multi-level modelling and longitudinal data analysis are discussed in Chapter 20.

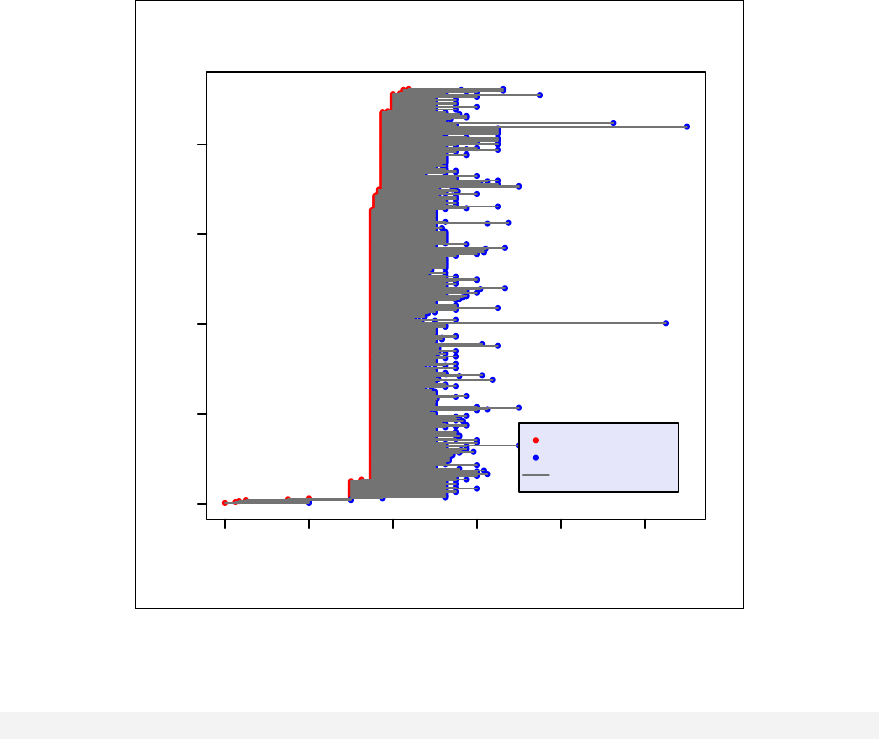

For cohort studies with individual follow-up times, survival analysis is discussed in

Chapter 21 and the Cox proportional hazard model is introduced in Chapter 22. In

chapter 23 the focus is on analyzing datasets involving attitudes, such as those

encountered in the social sciences. Chapter 24 deals with day-to-day work in

calculation of sample sizes and the technique of documentation that all professional

data analysts must master is explained in Chapter 25.

Some suggested strategies for handling large datasets are given in chapter 26. The

book ends with a demonstration of the tableStack command, which dramatically

shortens the preparation of a tidy stack of tables with a special technique of copy

and paste into a manuscript.

At the end of each chapter some references are given for further reading. Most

chapters also end with some exercises to practice on. Solutions to these are given at

the end of the book.

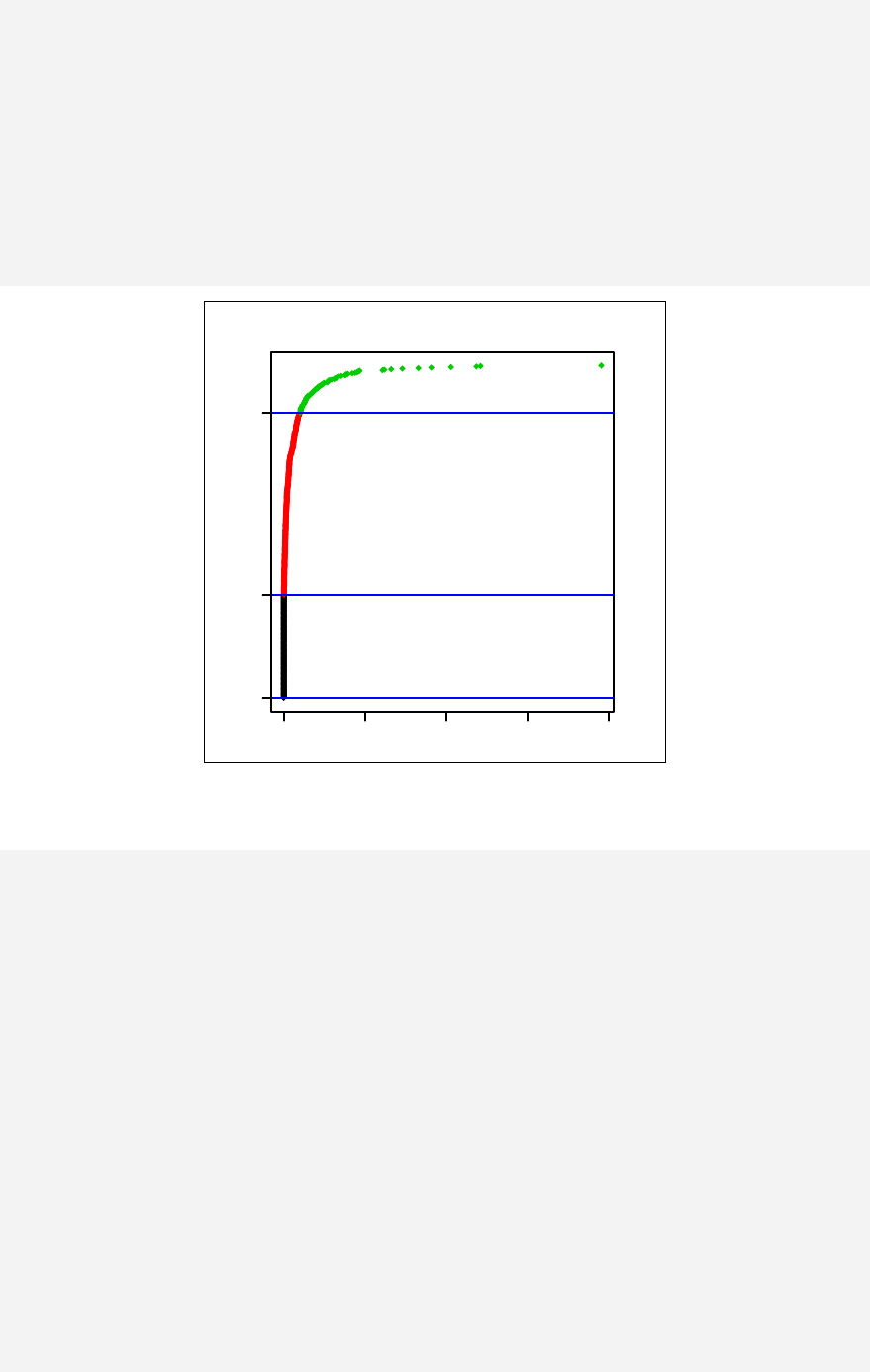

Colour

It is assumed that the readers of this book will simultaneously practice the

commands and see the results on the screen. The explanations in the text sometimes

describe the colour of graphs that appear in black and white in this book (the reason

for this is purely for reducing the printing costs). The electronic copy of the book,

however, does include colour.

Explanations of fonts used in this book

MASS An R package or library

Attitudes An R dataset

plot An R function

summ An Epicalc function (italic)

'abc' An R object

'pch' An argument to a function

'saltegg' A variable within a data frame

"data.txt" A data file on disk

v

Table of Contents

Chapter 1: Starting to use R __________________________________________ 1

Installation _____________________________________________________ 1

Text Editors ____________________________________________________ 3

Starting R Program_______________________________________________ 3

R libraries & packages ____________________________________________ 4

On-line help ____________________________________________________ 7

Using R _______________________________________________________ 8

Exercises _____________________________________________________ 14

Chapter 2: Vectors ________________________________________________ 15

Concatenation__________________________________________________ 16

Subsetting a vector with an index vector _____________________________ 17

Missing values _________________________________________________ 22

Exercises _____________________________________________________ 24

Chapter 3: Arrays, Matrices and Tables________________________________ 25

Arrays________________________________________________________ 25

Matrices ______________________________________________________ 29

Tables________________________________________________________ 29

Lists _________________________________________________________ 31

Exercises _____________________________________________________ 33

Chapter 4: Data Frames____________________________________________ 35

Data entry and analysis __________________________________________ 37

Datasets included in Epicalc ______________________________________ 38

Reading in data_________________________________________________ 38

Attaching the data frame to the search path ___________________________ 43

The 'use' command in Epicalc _____________________________________ 45

Exercises______________________________________________________ 48

vi

Chapter 5: Simple Data Exploration __________________________________ 49

Data exploration using Epicalc ____________________________________ 49

Exercise_______________________________________________________ 62

Chapter 6: Date and Time __________________________________________ 63

Computation functions related to date _______________________________ 63

Reading in a date variable ________________________________________ 66

Dealing with time variables _______________________________________ 67

Exercises______________________________________________________ 76

Chapter 7: An Outbreak Investigation: Describing Time ___________________ 77

Case definition _________________________________________________ 78

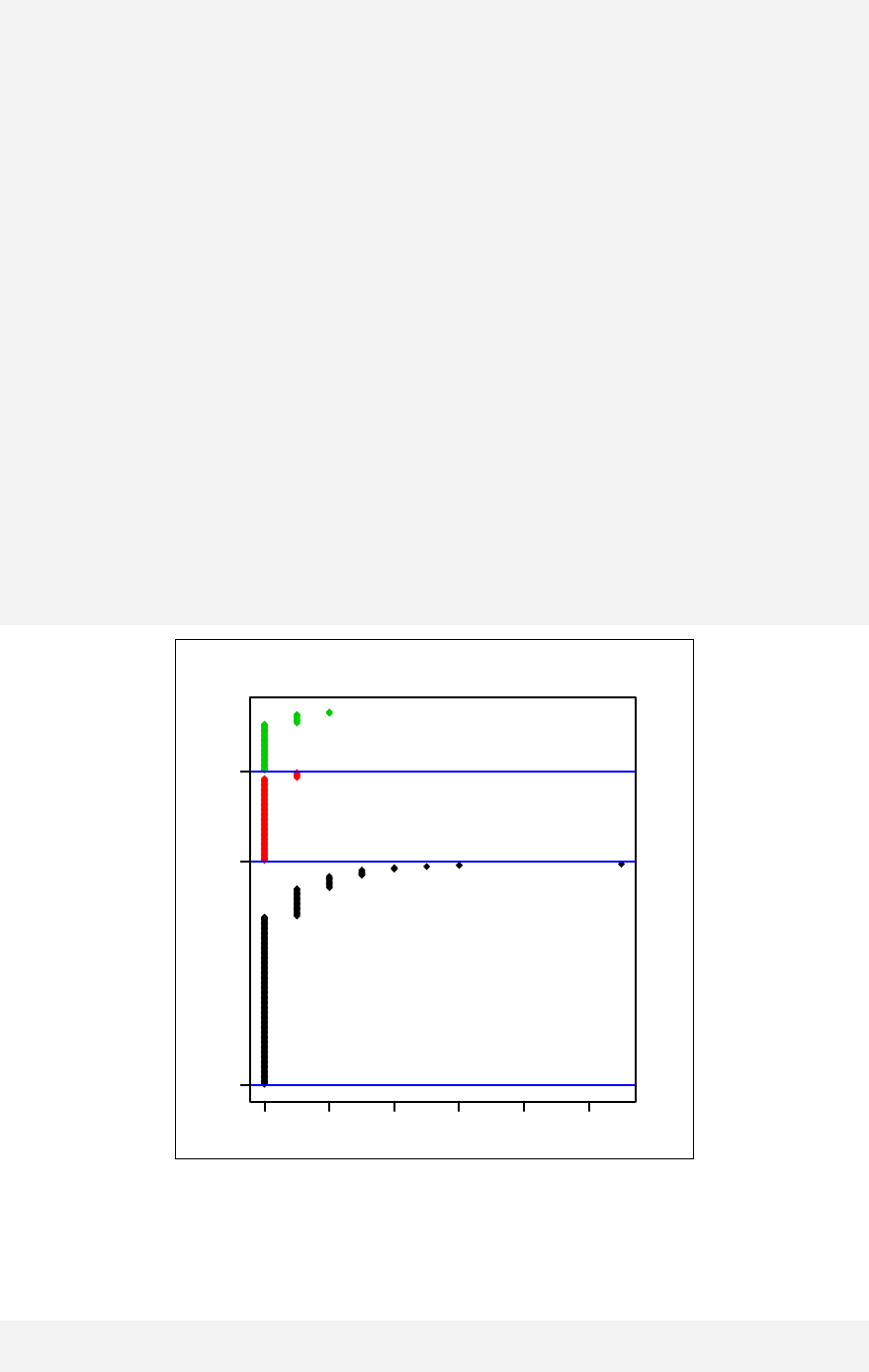

Paired plot ____________________________________________________ 83

Exercise_______________________________________________________ 86

Chapter 8: An Outbreak Investigation: Risk Assessment ___________________ 87

Recoding missing values _________________________________________ 87

Exploration of age and sex________________________________________ 90

Comparison of risk: Risk ratio and attributable risk ____________________ 92

Dose-response relationship _______________________________________ 94

Exercise_______________________________________________________ 96

Chapter 9: Odds Ratios, Confounding and Interaction ____________________ 97

Odds and odds ratio _____________________________________________ 97

Confounding and its mechanism ___________________________________ 99

Interaction and effect modification ________________________________ 103

Exercise______________________________________________________ 104

Chapter 10: Basic Data Management ________________________________ 105

Data cleaning _________________________________________________ 105

Identifying duplication ID _______________________________________ 105

Missing values ________________________________________________ 107

Recoding values using Epicalc____________________________________ 110

vii

Labelling variables with 'label.var'_________________________________ 112

Adding a variable to a data frame _________________________________ 115

Collapsing categories ___________________________________________ 118

Exercises_____________________________________________________ 120

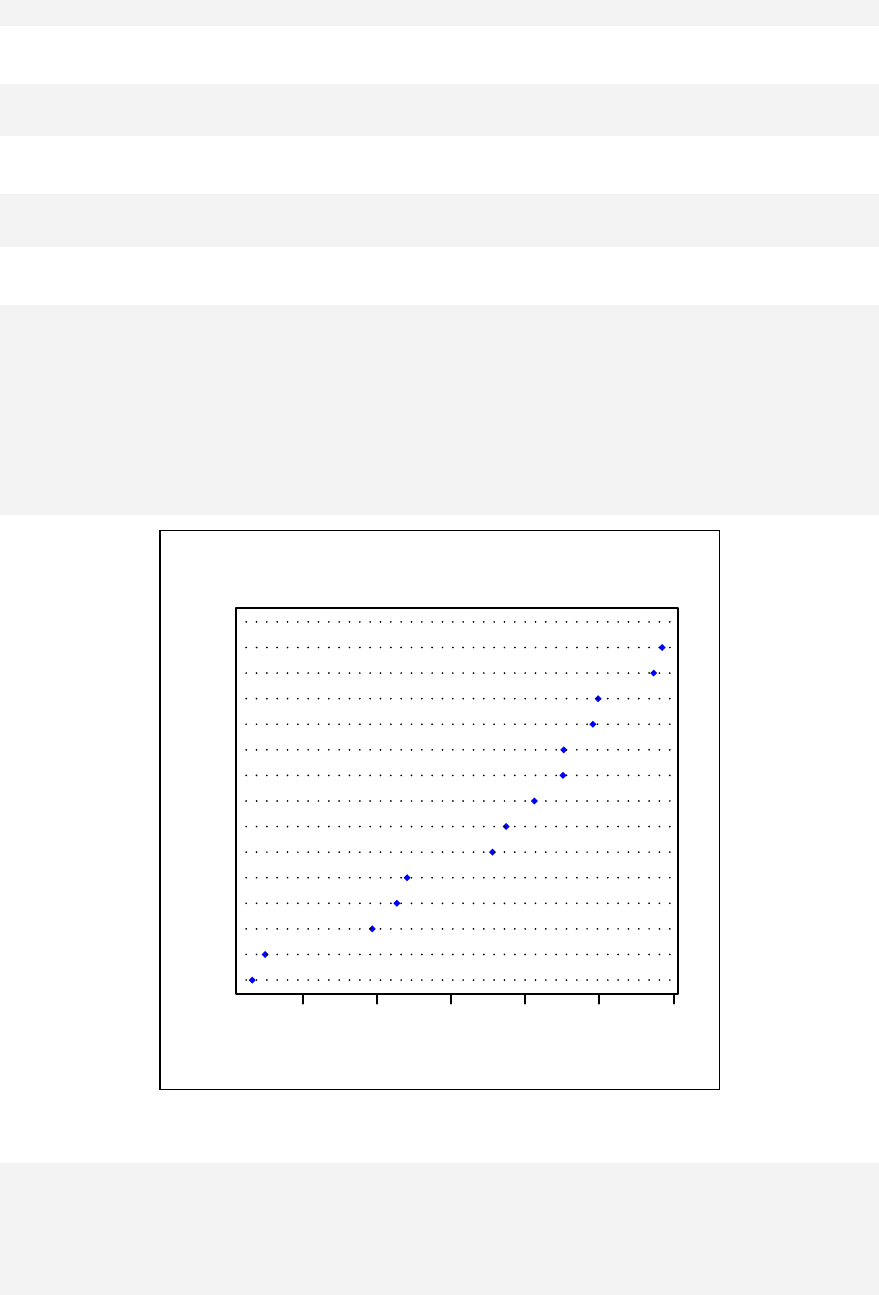

Chapter 11: Scatter Plots & Linear Regression _________________________ 121

Scatter plots __________________________________________________ 122

Components of a linear model ____________________________________ 124

Regression line, fitted values and residuals __________________________ 127

Checking normality of residuals __________________________________ 128

Exercise______________________________________________________ 130

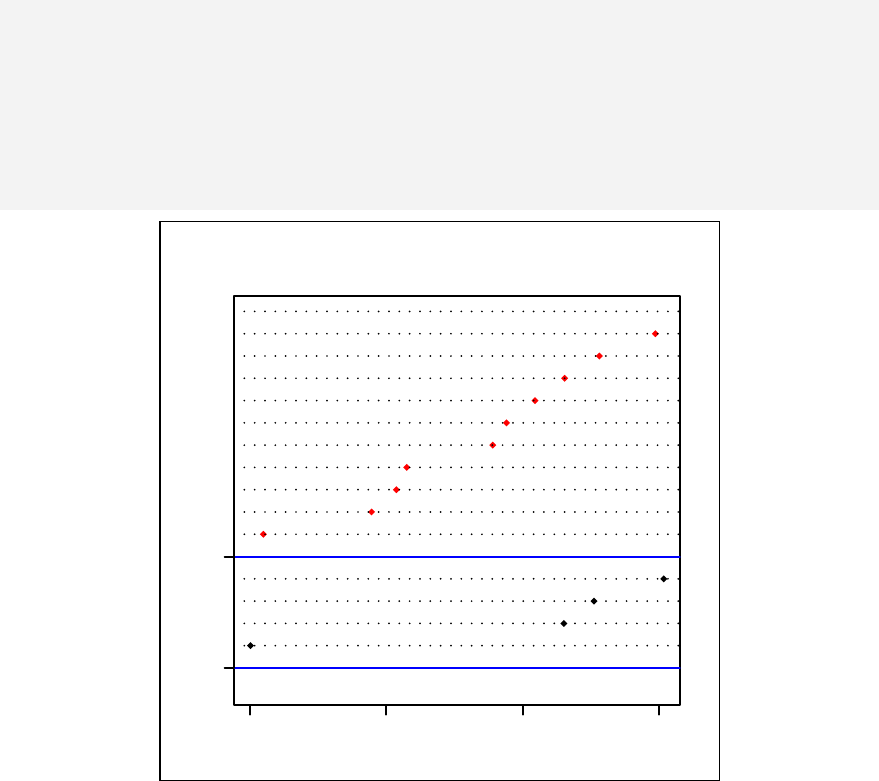

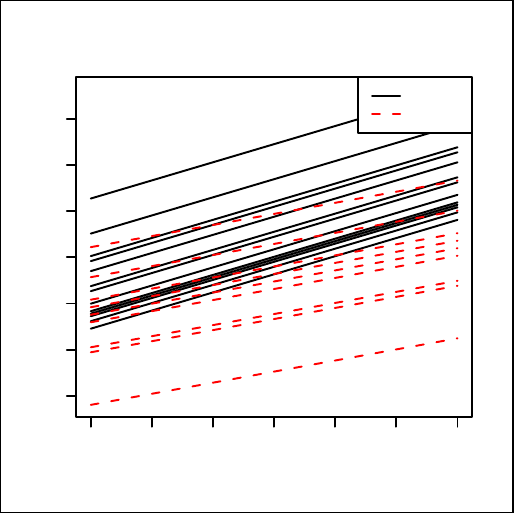

Chapter 12: Stratified linear regression_______________________________ 131

Exercise______________________________________________________ 138

Chapter 13: Curvilinear Relationship ________________________________ 139

Stratified curvilinear model ______________________________________ 144

Modelling with a categorical independent variable ____________________ 146

References ___________________________________________________ 148

Exercise______________________________________________________ 148

Chapter 14: Generalized Linear Models ______________________________ 149

Model attributes _______________________________________________ 150

Attributes of model summary_____________________________________ 151

Covariance matrix _____________________________________________ 152

References ___________________________________________________ 155

Exercise______________________________________________________ 156

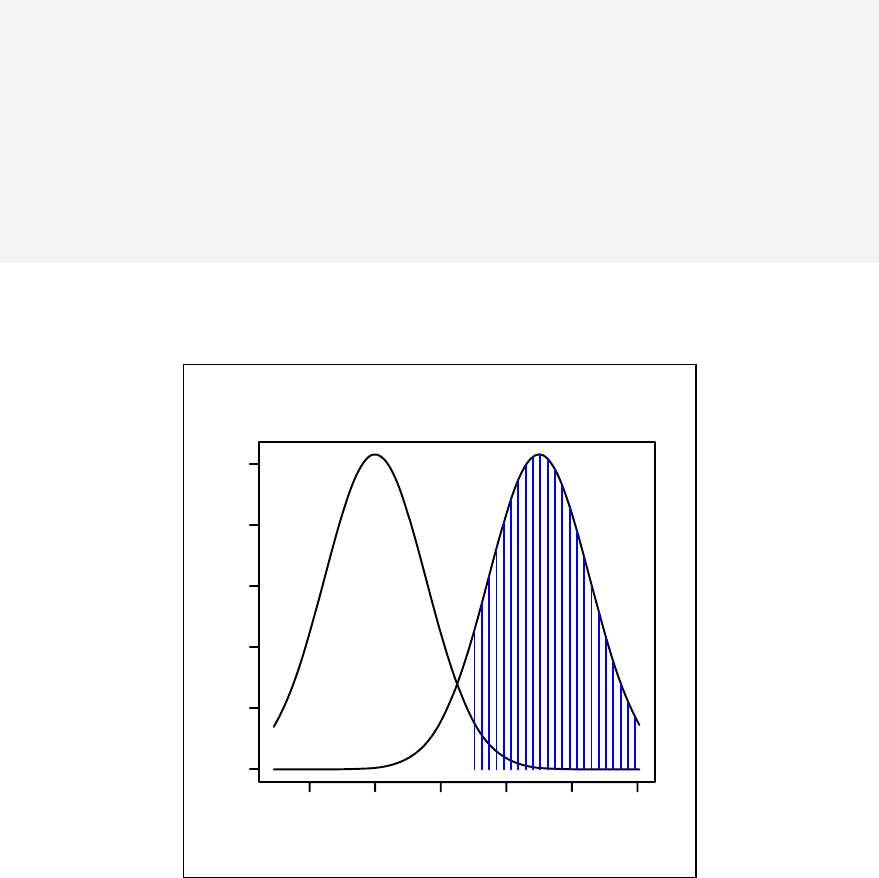

Chapter 15: Logistic Regression_____________________________________ 157

Distribution of binary outcome ___________________________________ 157

Logistic regression with a binary independent variable_________________ 161

Interaction ___________________________________________________ 166

Interpreting the odds ratio _______________________________________ 168

viii

References ___________________________________________________ 175

Exercises_____________________________________________________ 176

Chapter 16: Matched Case Control Study _____________________________ 177

1:n matching__________________________________________________ 179

Logistic regression for 1:1 matching _______________________________ 180

Conditional logistic regression____________________________________ 183

References ___________________________________________________ 184

Exercises_____________________________________________________ 184

Chapter 17: Polytomous Logistic Regression___________________________ 185

Polytomous logistic regression using R _____________________________ 187

Exercises_____________________________________________________ 192

Chapter 18: Ordinal Logistic Regression ______________________________ 193

Modelling ordinal outcomes______________________________________ 195

References ___________________________________________________ 196

Exercise______________________________________________________ 196

Chapter 19: Poisson and Negative Binomial Regression __________________ 197

Modelling with Poisson regression ________________________________ 201

Goodness of fit test_____________________________________________ 201

Incidence density ______________________________________________ 204

Negative binomial regression_____________________________________ 206

References ___________________________________________________ 209

Exercise______________________________________________________ 210

Chapter 20: Introduction to Multi-level Modelling ______________________ 211

Random intercepts model________________________________________ 215

Model with random slopes_______________________________________ 219

Exercises_____________________________________________________ 224

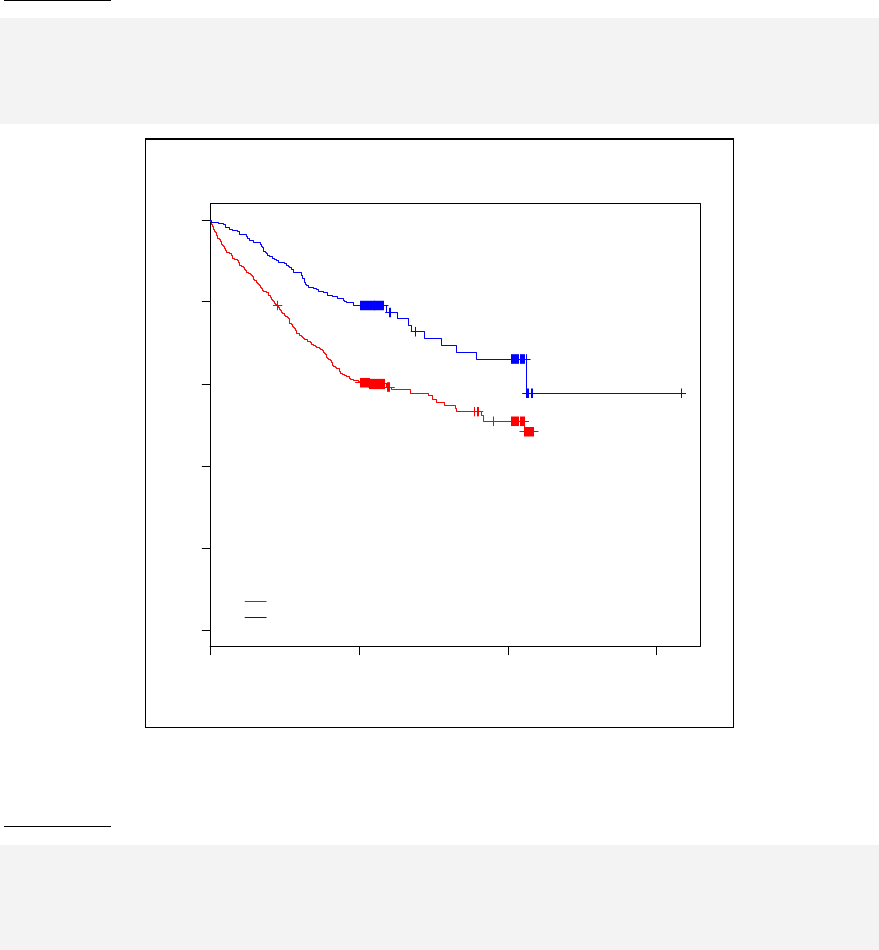

Chapter 21: Survival Analysis ______________________________________ 225

Survival object in R ____________________________________________ 228

ix

Life table ____________________________________________________ 229

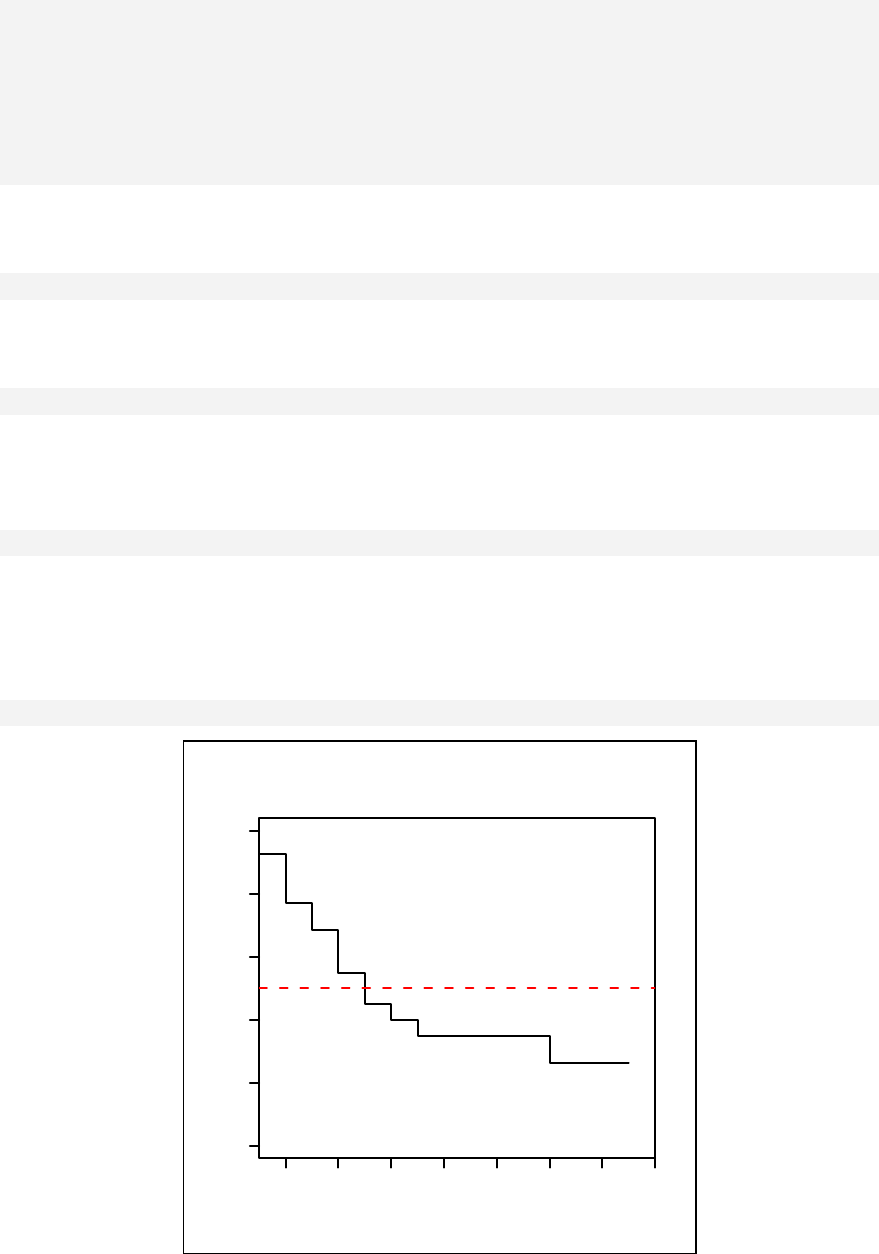

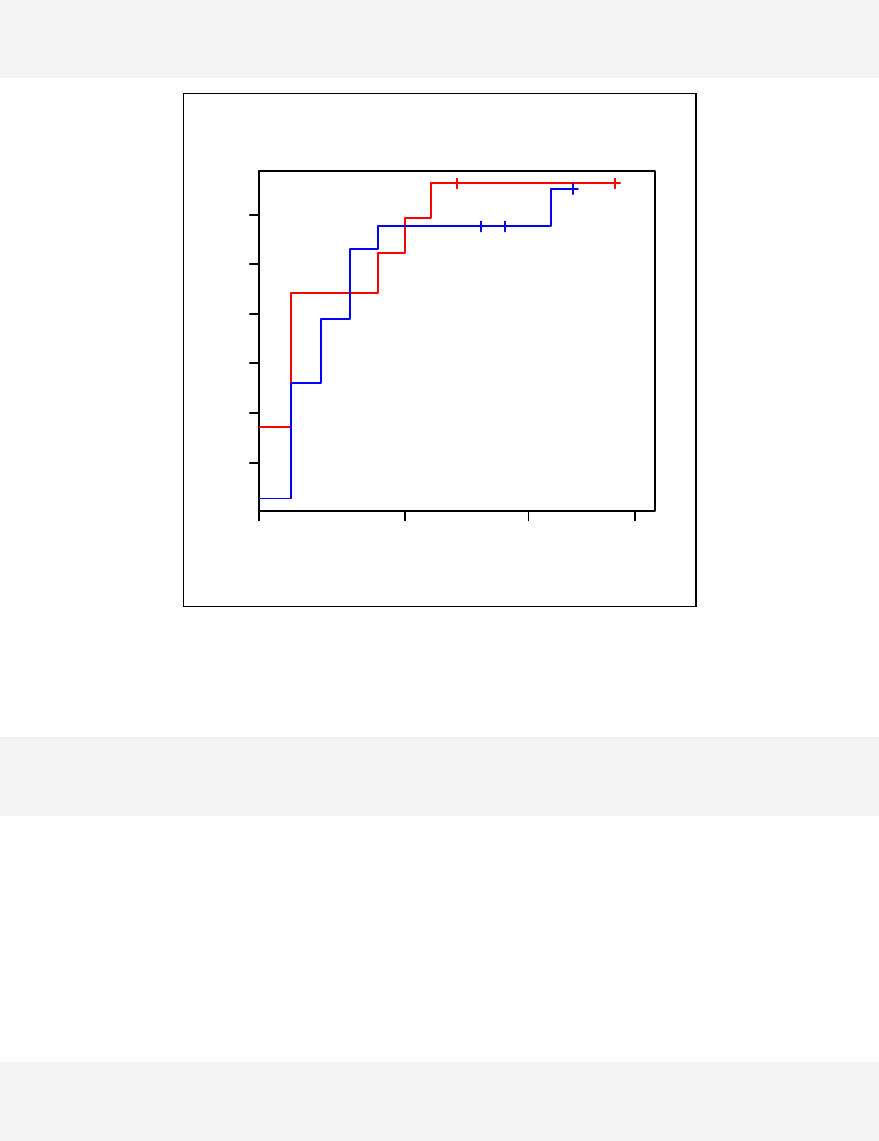

Kaplan-Meier curve ____________________________________________ 231

Cumulative hazard rate _________________________________________ 232

References ___________________________________________________ 235

Exercises_____________________________________________________ 236

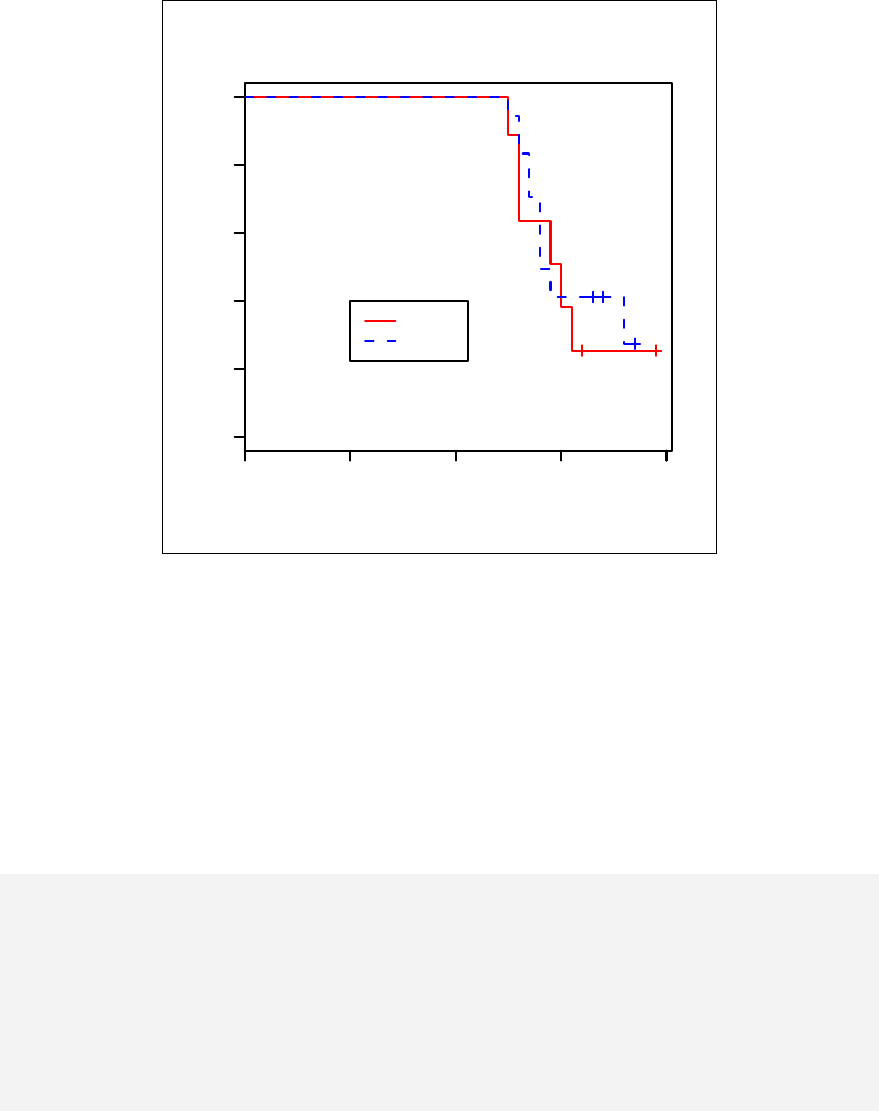

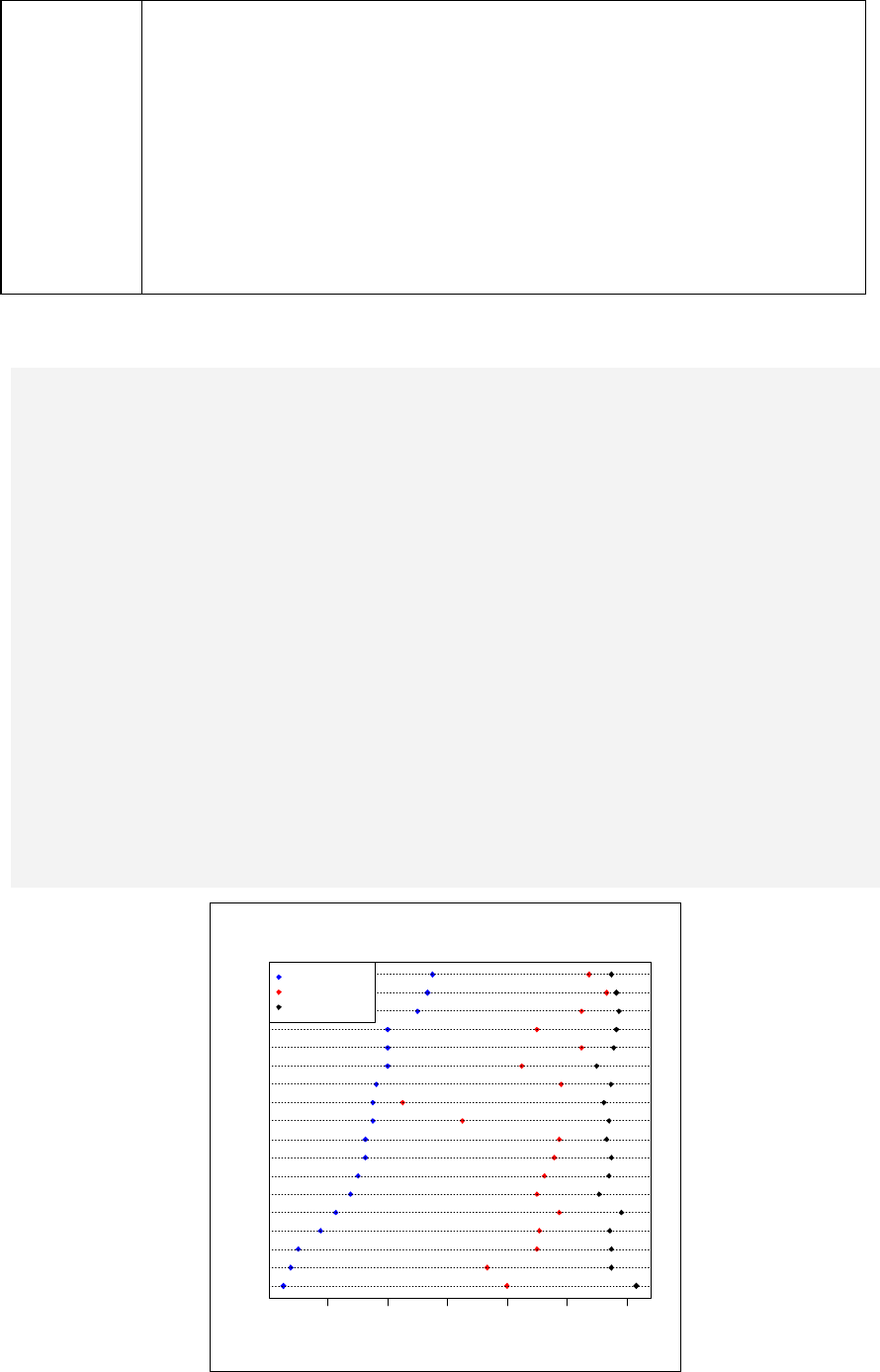

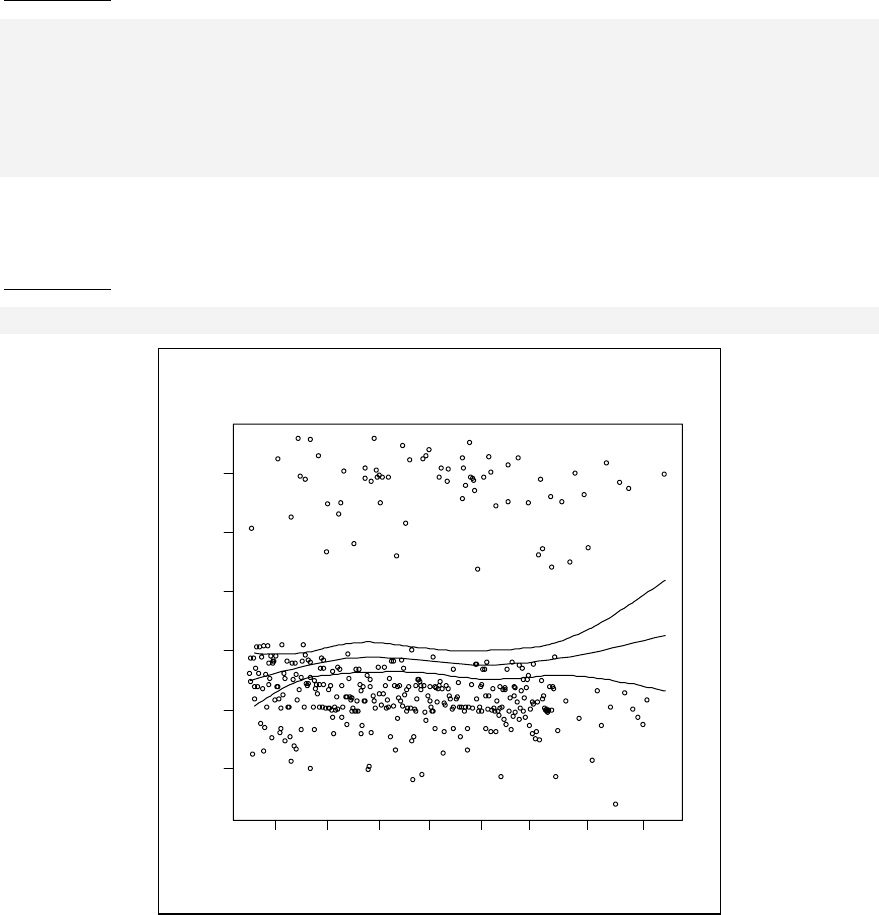

Chapter 22: Cox Regression________________________________________ 237

Testing the proportional hazards assumption_________________________ 238

Stratified Cox regression ________________________________________ 241

References ___________________________________________________ 243

Exercises_____________________________________________________ 244

Chapter 23 Analysing Attitudes Data _________________________________ 245

tableStack for logical variables and factors __________________________ 247

Cronbach's alpha ______________________________________________ 249

Summary ____________________________________________________ 253

References ___________________________________________________ 253

Exercise______________________________________________________ 254

Chapter 24: Sample size calculation__________________________________ 255

Field survey __________________________________________________ 255

Comparison of two proportions ___________________________________ 258

Comparison of two means _______________________________________ 263

Lot quality assurance sampling ___________________________________ 264

Power determination for comparison of two proportions________________ 266

Power for comparison of two means _______________________________ 267

Exercises_____________________________________________________ 268

Chapter 25: Documentation ________________________________________ 269

Crimson Editor________________________________________________ 270

Tinn-R ______________________________________________________ 271

Saving the output text___________________________________________ 274

x

Saving a graph ________________________________________________ 275

Chapter 26: Strategies of Handling Large Datasets _____________________ 277

Simulating a large dataset _______________________________________ 277

Chapter 27 Table Stacking for a Manuscript ___________________________ 281

Concept of 'tableStack'__________________________________________ 281

Colum of total ________________________________________________ 286

Exporting 'tableStack' and other tables into a manuscript _______________ 287

Solutions to Exercises _____________________________________________ 289

Index __________________________________________________________ 310

Epicalc Functions ________________________________________________ 312

Epicalc Datasets _________________________________________________ 313

Chapter 1: Starting to use R

This chapter concerns first use of R, covering installation, how to obtain help,

syntax of R commands and additional documentation. Note that this book was

written for Windows users, however R also works on other operating systems.

Installation

R is distributed under the terms of the GNU General Public License. It is freely

available for use and distribution under the terms of this license. The latest version

of R and Epicalc and their documentation can be downloaded from CRAN (the

Comprehensive R Archive Network).

The main web site is http://cran.r-project.org/ but there are mirrors all around the

world. Users should download the software from the nearest site. R runs on the

three common contemporary operating systems, Linux, MacOS X and Windows.

To install R, first go to the CRAN website and select your operating system from

the top of the screen. For Windows users click the Windows link and follow the

link to the base subdirectory. In this page you can download the setup file for

Windows, which at the time of publication of this book was R-2.6.1-win32.exe.

Click this link and click the "Save" button.

The set-up file for R is around 28Mb. To run the installation simply double-click

this file and follow the instructions. After installation, a shortcut icon of R should

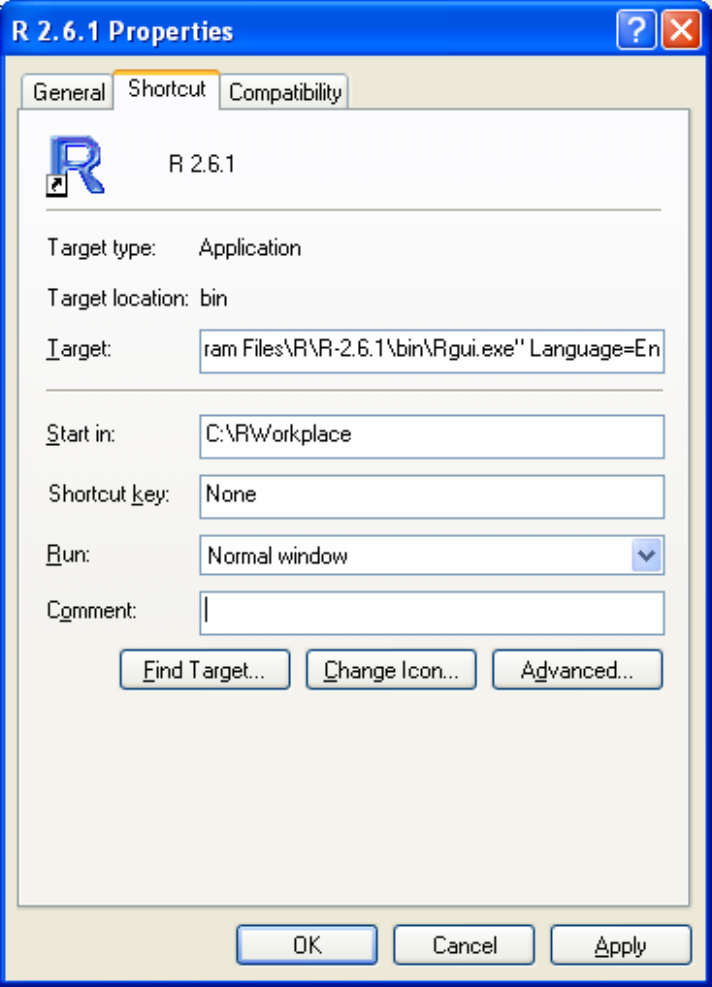

appear on the desktop. Right-click this R icon to change its start-up properties.

Replace the default 'Start in' folder with your own working folder. This is the folder

where you want R to work. Otherwise, the input and output of files will be done in

the program folder, which is not a good practice. You can create multiple shortcut

icons with different start-in folders for each project you are working on.

Suppose the work related to this book will be stored in a folder called

'C:\RWorkplace'. The 'Properties' of the icon should have the 'Start in:' text box

filled with 'C:\RWorkplace' (do not type the single quote signs ' and '. They are used

in this book to indicate objects or technical names).

R detects the main language of the operating system in the computer and tries to

use menus and dialog boxes in that language. For example, if you are running R on

a Windows XP in the Chinese language, the menus and dialog boxes will appear in

Chinese. Since this book is written in English, it is advised to set the language to be

2

English so that the responses on your computer will be the same as those in this

book. In the 'Shortcut' tab of the R icon properties, add Language=en at the end

of the 'Target'. Include a space before the word 'Language'.

So, the Target text box for R-2.6.1 version icon would be:

"C:\Program Files\R\R-2.6.1\bin\Rgui.exe" Language=en

To use this book efficiently, a specialised text editor such as Crimson Editor or

Tinn-R must be installed on your computer. In addition, the Epicalc package needs

to be installed and loaded.

3

Text Editors

Crimson Editor

This software can be installed in the conventional fashion as all other software, i.e.

by executing the setup.exe file and following the instructions.

Crimson Editor has some nice features that can assist the user when working with

R. It is very powerful for editing script or command files used by various software

programs, such as C++, PHP and HTML files. Line numbers can be shown and

open and closed brackets can be matched. These features are important because

they are commonly used in the R command language.

Installation and set-up of Crimson Editor is explained in Chapter 25.

Tinn-R

Tinn-R is probably the best text file editor to use in conjunction with the R

program. It is specifically designed for working with R script files. In addition to

syntax highlighting of R code, Tinn-R can interact with R using specific menus and

tool bars. This means that sections of commands can be highlighted and sent to the

R console (sourced) with a single button click. Tinn-R can be downloaded from the

Internet at: www.sciviews.org/Tinn-R.

Starting R Program

After modifying the start-up properties of the R icon, double-click the R icon on the

desktop. The program should then start and the following output is displayed on the

R console.

R version 2.6.1 (2007-11-26)

Copyright (C) 2007 The R Foundation for Statistical Computing

ISBN 3-900051-07-0

R is free software and comes with ABSOLUTELY NO WARRANTY.

You are welcome to redistribute it under certain conditions.

Type 'license()' or 'licence()' for distribution details.

Natural language support but running in an English locale

R is a collaborative project with many contributors.

Type 'contributors()' for more information and

'citation()' on how to cite R or R packages in publications.

Type 'demo()' for some demos, 'help()' for on-line help, or

'help.start()' for an HTML browser interface to help.

Type 'q()' to quit R.

>

4

The output shown above was produced from R version 2.6.1, released on

November 26, 2007. The second paragraph declares and briefly explains the

warranty and license. The third paragraph gives information about contributors and

how to cite R in publications. The fourth paragraph suggests a few commands for

first-time users to try.

In this book, R commands begin with the ">" sign, similar to what is shown at the

R console window. You should not type the ">". Just type the commands. Within

this document both the R commands and output lines will be in Courier New

font whereas the explanatory text are in Times New Roman. Epicalc commands are

shown in italic, whereas standard R commands are shown in normal font style.

The first thing to practice is to quit the program. Click the cross sign at the far right

upper corner of the program window or type the following at the R console:

> q()

A dialog box will appear asking "Save workspace image?" with three choices:

"Yes", "No" and "Cancel". Choose "Cancel" to continue working with R. If you

choose "Yes", two new files will be created in your working folder. Any previous

commands that have been typed at the R console will be saved into a file called

'.Rhistory' while the current workspace will be saved into a file called ".Rdata".

Notice that these two files have no prefix. In the next session of computing, when R

is started in this folder, the image of the working environment of the last saved R

session will be retrieved automatically, together with the command history.

Continued use of R in this fashion (quitting and saving the unnamed workspace

image) will result in these two files becoming larger and larger. Usually one would

like to start R afresh every time so it is advised to always choose "No" when

prompted to save the workspace. Alternatively you may type:

> q("no")

to quit without saving the workspace image and prevent the dialog box message

appearing.

Note that before quitting R you can save your workspace image by typing

> save.image("C:/RWorkplace/myFile.RData")

where 'myFile' is the name of your file. Then when you quit R you should answer

"No" to the question.

R libraries & packages

R can be defined as an environment within which many classical and modern

statistical techniques, called functions, are implemented. A few of these techniques

are built into the base R environment, but many are supplied as packages. A

package is simply a collection of these functions together with datasets and

documentation. A library is a collection of packages typically contained in a single

directory on the computer.

5

There are about 25 packages supplied with R (called “standard” or “recommended”

packages) and many more are available through the CRAN web site. Only 7 of

these packages are loaded into memory when R is executed. To see which packages

are currently loaded into memory you can type:

> search()

[1] ".GlobalEnv" "package:methods" "package:stats"

[4] "package:graphics" "package:grDevices" "package:utils"

[7] "package:datasets" "Autoloads" "package:base"

The list shown above is in the search path of R. When R is told to do any work, it

will look for a particular object for it to work with from the search path. First, it will

look inside '.GlobalEnv', which is the global environment. This will always be the

first search position. If R cannot find what it wants here, it then looks in the second

search position, in this case "package:methods", and so forth. Any function that

belongs to one of the loaded packages is always available during an R session.

Epicalc package

The Epicalc package can be downloaded from the web site http://cran.r-project.org.

On the left pane of this web page, click 'Packages'. Move down along the

alphabetical order of various packages to find 'epicalc'. The short and humble

description is 'Epidmiological calculator'. Click 'epicalc' to hyperlink to the

download page. On this page you can download the Epicalc source code(.tar.gz),

and the two binary versions for MacIntosh (.tgz) and Windows (.zip) versions,

along with the documentation (.pdf).

The Epicalc package is updated from time to time. The version number is in the

suffix. For example, epicalc_2.6.1.6.zip is the binary file for use on the Windows

operating system and the version of Epicalc is 2.6.1.6. A newer version is created to

have bugs (errors in the programme) fixed, to improve the features of existing

functions (commands) and to include new functions.

The file epicalc_version.zip ('version' increases with time) is a

compressed file containing the fully compiled Epicalc package. Installation of this

package must be done within R itself. Usually there is only one session of

installation needed unless you want to overwrite the old package with a newer one

of the same name. You will also need to reinstall this package if you install a new

version of R. To install Epicalc, click 'Packages' on the menu bar at the top of the

window. Choose 'Install packages from local zip files...". When the navigating

window appears, browse to find the file and open it.

6

Successful installation will result in:

> utils:::menuInstallLocal()

package 'epicalc' successfully unpacked and MD5 sums checked

updating HTML package descriptions

Installation is now complete; however functions within Epicalc are still not

available until the following command has been executed:

> library(epicalc)

Note the use of lowercase letters. When the console accepts the command quietly,

we can be reasonably confident that the command has been accepted. Otherwise,

errors or warnings will be reported.

A common warning is a report of a conflict. This warning is, most of the time, not

very serious. This just means that an object (usually a function) with the same name

already exists in the working environment. In this case, R will give priority to the

object that was loaded more recently. The command library(epicalc) must

be typed everytime a new session of R is run.

Updating packages

Whenever a new version of a package is released it is advised to keep up to date by

removing (unloading) the old one and loading the new one. To unload the Epicalc

package, you may type the following at the R console:

> detach(package:epicalc)

After typing the above command, you may then install the new version of the

package as mentioned in the previous section. If there are any problems, you may

need to quit R and start afresh.

RProfile.site

Whenever R is run it will execute the commands in the "RProfile.site" file, which

is located in the C:\Program Files\R\R-2.6.1\etc folder. Remember to replace the R

version with the one you have installed. By including the command

library(epicalc) in the RProfile.site file, every time R is run, the Epicalc

package will be automatically loaded and ready for use. You may edit this file and

insert the command above.

Your Rprofile.site file should look something like this:

library(epicalc)

# Things you might want to change

# options(papersize="a4")

# options(editor="notepad")

# options(pager="internal")

7

# to prefer Compiled HTML help

# options(chmhelp=TRUE)

# to prefer HTML help

# options(htmlhelp=TRUE)

On-line help

On-line help is very useful when using software, especially for first time users.

Self-studying is also possible from the on-line help of R, although with some

difficulty. This is particularly true for non-native speakers of English, where

manuals can often be too technical or wordy. It is advised to combine the use of this

book as a tutorial and on-line help as a reference manual.

On-line help documentation comes in three different versions in R. The default

version is to show help information in a separate window within R. This format is

written in a simple markup language that can be read by R and can be converted to

LaTeX, which is used to produce the printed manuals. The other versions, which

can be set in the "Rprofile.site" file mentioned previously, are HTML

(htmlhelp=TRUE) and compiled HTML (chmhelp=TRUE). The later version is

Windows specific and if chosen, help documentation will appear in a Windows help

viewer. Each help format has its own advantages and you are free to choose the

format you want.

For self study, type

> help.start()

The system will open your web browser from the main menu of R. 'An Introduction

to R' is the section that all R users should try to read first. Another interesting

section is 'Packages'. Click this to see what packages you have available. If the

Epicalc package has been loaded properly, then this name should also appear in the

list. Click 'Epicalc' to see the list of the functions available. Click each of the

functions one by one and you will see the help for that individual function. This

information can also be obtained by typing 'help(myFun)' at the R console, where

'myFun' is the name of the function. To get help on the 'help' function you can type

> help(help)

or perhaps more conveniently

> ?help

For fuzzy searching you can try

> help.search("...")

Replace the dots with the keyword you want to search for. This function also allows

you to search on multiple keywords. You can use this to refine a query when you

get too many responses.

8

Very often the user would want to know how to get other statistical analysis

functions that are not available in a currently installed package. A better option

would be to search from the CRAN website using the 'search' feature located on the

left side of the web page and Google will do a search within CRAN. The results

would be quite extensive and useful. The user then can choose the website to go to

for further learning.

Now type

> search()

You should see "package:epicalc" in the list. If the Epicalc package has not been

loaded, then the functions contained inside will not be available for use.

Having the Epicalc package in the search path means we can use all commands or

functions in that package. Other packages can be called when appropriate. For

example, the package survival is necessary for survival analysis. We will encounter

this in the corresponding section.

The order of the search path is sometimes important. For Epicalc users, it is

recommended that any additional library should be called early in the session of R,

i.e. before reading in and attaching to a data frame. This is to make sure that the

active dataset will be in the second search position. More details on this will be

discussed in Chapter 4.

Using R

A basic but useful purpose of R is to perform simple calculations.

> 1+1

[1] 2

When you type '1+1' and hit the <Enter> key, R will show the result of the

calculation, which is equal to 2.

For the square root of 25:

> sqrt(25)

[1] 5

The wording in front of the left round bracket is called a 'function'. The entity inside

the bracket is referred to as the function's 'argument'. Thus in the last example,

'sqrt()' is a function, and when imposed on 25, the result is 5.

To find the value of e:

> exp(1)

[1] 2.718282

9

Exponentiation of 1 results in the value of e, which is about 2.7. Similarly, the

exponential value of -5 or e-5 would be

> exp(-5)

[1] 0.006738

Syntax of R commands

R will compute only when the commands are syntactically correct. For example, if

the number of closed brackets is fewer than the number of opened ones and the

<Enter> key is pressed, the new line will start with a '+' sign, indicating that R is

waiting for completion of the command. After the number of closed brackets equals

the number of opened ones, computation is carried out and the result appears.

> log(3.8

+ )

[1] 1.335001

However, if the number of closed brackets exceeds the number of opened ones, the

result is a syntax error, or computer grammatical.

> log(3.2))

Error: syntax error

R objects

In the above simple calculation, the results are immediately shown on the screen

and are not stored. To perform a calculation and store the result in an object type:

> a = 3 + 5

We can check whether the assignment was successful by typing the name of the

newly created object:

> a

[1] 8

More commonly, the assignment is written in the following way.

> a <- 3 + 5

> a

[1] 8

For ordinary users, there is no obvious difference between the use of = and <-. The

difference applies at the R programming level and will not be discussed here.

Although <- is slightly more awkward to type than =, the former technique is

recommended to avoid any confusion with the comparison operator (==). Notice

that there is no space between the two components of the assignment operator <-.

Now create a second object called 'b' equal to the square root of 36.

> b <- sqrt(36)

10

Then, add the two objects together.

> a + b

[1] 14

We can also compute the value on the left and assign the result to a new object

called 'c' on the right, using the right assign operator, ->.

> a + 3*b -> c

> c

[1] 26

However, the following command does not work.

> a + 3b -> c

Error: syntax error

R does not recognise '3b'. The * symbol is needed, which indicates multiplication.

The name of an object can consist of more than one letter.

> xyx <- 1

> xyx

[1] 1

A nonsense thing can be typed into the R console such as:

> qwert

Error: Object "qwert" not found

What is typed in is syntactically correct. The problem is that 'qwert' is not a

recognizable function nor a defined object.

A dot can also be used as a delimiter for an object name.

> baht.per.dollar <- 40

> baht.per.dollar

[1] 40

In conclusion, when one types in anything at the R console, the program will try to

show the value of that object. If the signs = or <- or -> are encountered, the value

will be stored to the object on the left of = and <- or the right hand side of ->.

Character or string objects

Character or string means alphanumeric or letter. Examples include the name of a

person or an address. Objects of this type cannot be used for calculation. Telephone

numbers and post-codes are also strings.

11

> A <- "Prince of Songkla University"

> A

[1] "Prince of Songkla University"

R is case sensitive, so 'A' is not the same as 'a'.

> a

[1] 8

> A

[1] "Prince of Songkla University"

Putting comments in a command line

In this book, as with most other programming documents, the author usually inserts

some comments as a part of documentation to remind him/herself or to show some

specific issue to the readers.

R ignores any words following the # symbol. Thus, such a sentence can be used for

comments. Examples:

> 3*3 = 3^2 # This gives a syntax error

> 3*3 == 3^2 # This is correct syntax-wise.

> 3*2 == 3^2 # Correct syntax but the result is FALSE

Logical: TRUE and FALSE

In the last few commands:

> 3*3 == 3^2

[1] TRUE

But

> 3*2 == 3^2

[1] FALSE

Note that we need two equals signs to check equality but only one for assignment.

> 3*2 < 3^2

[1] TRUE

Logical connection using & (logical 'and')

Both TRUE and FALSE are logical objects. They are both in upper case.

Connection of more than one such object results in either TRUE or FALSE. If all

are TRUE, the final result is TRUE. For example:

> TRUE & TRUE

[1] TRUE

12

A combination of FALSE with any other logical is always FALSE.

> TRUE & FALSE

[1] FALSE

> FALSE & FALSE

[1] FALSE

Note that

> (FALSE & TRUE) == (TRUE & FALSE)

[1] TRUE

Without brackets, computation is carried out from left to right.

> FALSE & TRUE == TRUE & FALSE

[1] FALSE

Logical connection with | (logical 'or')

This kind of connection looks for anything which is TRUE.

> TRUE | TRUE

[1] TRUE

> TRUE | FALSE

[1] TRUE

> 3*3 == 3^2 | 3*2 == 3^2

[1] TRUE

Value of TRUE and FALSE

Numerically, TRUE is equal to 1 and FALSE is equal to 0.

> TRUE == 1

[1] TRUE

> FALSE == 0

[1] TRUE

> (3*3 == 3^2) + (9 > 8)

[1] 2

Each of the values in the brackets is TRUE, which is equal to 1. The addition of two

TRUE objects results in a value of 2. However,

> 3*3 == 3^2 + 9 > 8

Error: syntax error in "3*3 == 3^2 + 9 >"

This is due to the complicated sequence of the operation. Therefore, it is always

better to use brackets in order to specify the exact sequence of computation.

Let's leave R for the time being. Answer "Yes" to the question: "Save work space

image?".

13

Please remember that answering "No" is the preferred response in this book as we

recommend typing

> q("no")

to end each R session. Responding "Yes" here is just an exercise in understanding

the concept of workspace images, which follows in chapter 2.

References

An Introduction to R. ISBN 3-900051-12-7.

R Language Definition. ISBN 3-900051-13-5.

Both references above can be downloaded from the CRAN web site.

14

Exercises

Problem 1.

The formula for sample size of a descriptive survey is

()

π=n −1

1.96

2

2

π

δ

where n is the sample size,

π is the prevalence in the population (not to be confused with the constant pi), and

δ is half the width of the 95% confidence interval (precision).

Compute the required sample size if the prevalence is estimated to be 30% of the

population and the 95% confidence interval is not farther from the estimated

prevalence by more than 5%.

Problem 2.

Change the above prevalence to 5% and suppose each side of the 95% confidence

interval is not farther from the estimated prevalence by more than 2%.

Problem 3.

The term 'logit' denotes 'log{P/(1-P)}' where P is the risk or prevalence of a disease.

Compute the logits from the following prevalences: 1%, 10%, 50%, 90% and

100%.

15

Chapter 2: Vectors

In the previous chapter, we introduced simple calculations and storage of the results

of the calculations. In this chapter, we will learn slightly more complicated issues.

History and saved objects

Outside R, examine the working folder; you should see two new files: ".Rdata",

which is the working environment saved from the latest R session, and

".Rhistory", which recorded all the commands in the preceding R session.

".Rdata" is a binary file and only recognised by the R program, while ".Rhistory"

is a text file and can be edited using any text editor such as Notepad, Crimson

Editor or Tinn-R.

Open R from the desktop icon. You may see this in the last line:

[Previously saved workspace restored]

This means that R has restored commands from the previous R session (or history)

and the objects stored form this session. Press the up arrow key and you will see the

previous commands (both correct and incorrect ones). Press <Enter> following the

command; the results will come up as if you continued to work in the previous

session.

> a

[1] 8

> A

[1] "Prince of Songkla University"

Both 'a' and 'A' are retained from the previous session.

Note: ______________________________________________________________________

The image saved from the previous session contains only objects in the '.GlobalEnv', which is

the first position in the search path. The whole search path is not saved. For example, any

libraries manually loaded in the previous session need to be reloaded. However, the Epicalc

library is automatically loaded every time we start R (from the setting of the "Rprofile.site"

file that we modified in the previous chapter). Therefore, under this setting, regardless of

whether the workspace image has been saved in the previous session or not, Epicalc will

always be in the search path.

16

If you want to remove the objects in the environment and the history, quit R

without saving. Go to the 'start in' folder and delete the two files ".Rhistory" and

".Rdata". Then restart R. There should be no message indicating restoration of

previously saved workspace and no history of previous commands.

Concatenation

Objects of the same type, i.e. numeric with numeric, string with string, can be

concatenated. In fact, a vector is an object containing concatenated, atomised (no

more divisible) objects of the same type.

To concatenate, the function 'c()' is used with at least one atomised object as its

argument. Create a simple vector having the integers 1, 2 and 3 as its elements.

> c(1,2,3)

[1] 1 2 3

This vector has three elements: 1, 2 and 3. Press the up arrow key to reshow this

command and type a right arrow to assign the result to a new object called 'd'. Then

have a look at this object.

> c(1,2,3) -> d

> d

Do some calculation with 'd' and observe the results.

> d + 4

> d - 3

> d * 7

> d / 10

> d * d

> d ^ 2

> d / d

> d == d

In addition to numbers, words can be used to create string vectors.

> B <- c("Faculty of Medicine","Prince of Songkla University")

> B

[1] "Faculty of Medicine" "Prince of Songkla University"

Vectors of systematic numbers

Sometimes a user may want to create a vector of numbers with a certain pattern.

The following command will create a vector of integers from 1 to 10.

> x <- 1:10; x

[1] 1 2 3 4 5 6 7 8 9 10

17

For 5 repetitions of 13:

> rep(13, times=5)

[1] 13 13 13 13 13

The function 'rep' is used to replicate values of the argument. For sequential

numbers from -1 to 11 with an incremental step of 3 type:

> seq(from = -1, to = 11, by = 3)

[1] -1 2 5 8 11

In this case 'seq' is a function with three arguments 'from', 'to' and 'by'. The

function can be executed with at least two parameters, 'from' and 'to', since the 'by'

parameter has a default value of 1 (or -1 if 'to' is less than 'from').

> seq(10, 23)

[1] 10 11 12 13 14 15 16 17 18 19 20 21 22 23

> seq(10, -3)

[1] 10 9 8 7 6 5 4 3 2 1 0 -1 -2 -3

The order of the arguments 'from', 'to' and 'by' is assumed if the words are omitted.

When explicitly given, the order can be changed.

> seq(by=-1, to=-3, from=10)

This rule of argument order and omission applies to all functions. For more details

on 'seq' use the help feature.

Subsetting a vector with an index vector

In many instances, only a certain part of a vector needs to be used. Let's assume we

have a vector of running numbers from 3 to 100 in steps of 7. What would be the

value of the 5th number?

> seq(from=3, to=100, by=7) -> x

> x

[1] 3 10 17 24 31 38 45 52 59 66 73 80 87 94

In fact, the vector does not end with 100, but rather 94, since a further step would

result in a number that exceeds 100.

> x[5]

[1] 31

The number inside the square brackets '[]' is called a subscript. It denotes the

position or selection of the main vector. In this case, the value in the 5th position of

the vector 'x' is 31. If the 4th, 6th and 7th positions are required, then type:

> x[c(4,6,7)]

[1] 24 38 45

18

Note that in this example, the object within the subscript can be a vector, thus the

concatenate function c is needed, to comply with the R syntax. The following

would not be acceptable:

> x[4,6,7]

Error in x[4, 6, 7] : incorrect number of dimensions

To select 'x' with the first four elements omitted, type:

> x[-(1:4)]

[1] 31 38 45 52 59 66 73 80 87 94

A minus sign in front of the subscript vector denotes removal of the elements of 'x'

that correspond to those positions specified by the subscript vector.

Similarly, a string vector can be subscripted.

> B[2]

[1] "Prince of Songkla University"

Using a subscript vector to select a subset

A vector is a set of numbers or strings. Application of a condition within the

subscript results in a subset of the main vector. For example, to choose only even

numbers of the vector 'x' type:

> x[x/2 == trunc(x/2)]

[1] 10 24 38 52 66 80 94

The function trunc means to truncate or remove the decimals. The condition that

'x' divided by 2 is equal to its truncated value is true iff (if and only if) 'x' is an even

number. The same result can be obtained by using the 'subset' function.

> subset(x, x/2==trunc(x/2))

If only odd numbers are to be chosen, then the comparison operator can simply be

changed to !=, which means 'not equal'.

> subset(x, x/2!=trunc(x/2))

[1] 3 17 31 45 59 73 87

The operator ! prefixing an equals sign means 'not equal', thus all the chosen

numbers are odd. Similarly, to choose the elements of 'x' which are greater than 30

type:

> x[x>30]

[1] 31 38 45 52 59 66 73 80 87 94

19

Functions related to manipulation of vectors

R can compute statistics of vectors very easily.

> fruits <- c(5, 10, 1, 20)

> summary(fruits)

Min. 1st Qu. Median Mean 3rd Qu. Max.

1.0 4.0 7.5 9.0 12.5 20.0

> sum(fruits)

[1] 36

There are 36 fruits in total.

> length(fruits) # number of different types of fruits

[1] 4

> mean(fruits) # mean of number of fruits

[1] 9

> sd(fruits) # standard deviation

[1] 8.205689

> var(fruits) # variance

[1] 67.33333

Non-numeric vectors

Let's create a string vector called 'person' containing 11 elements.

> person <- c("A","B","C","D","E","F","G","H","I","J","K")

Alternatively, and more economically:

> person <- LETTERS[1:11]

Now check the class of the 'person' and 'fruits' objects.

> class(person)

[1] "character"

> class(fruits)

[1] "numeric"

Character types are used for storing names of individuals. To store the sex of the

person, initially numeric codes are given: 1 for male, 2 for female, say.

> sex <- c(1,2,1,1,1,1,1,1,1,1,2)

> class(sex)

[1] "numeric"

> sex1 <- as.factor(sex) # Creating sex1 from sex

The function as.factor coerces the object 'sex' into a factor, which is a

categorical data type in R.

> sex1

[1] 1 2 1 1 1 1 1 1 1 1 2

Levels: 1 2

20

There are two levels of sex.

> class(sex1)

[1] "factor"

> is.factor(sex)

[1] FALSE

> is.factor(sex1)

[1] TRUE

Now try to label 'sex1'.

> levels(sex1) <- c("male", "female")

The levels of 'sex' is a string vector used for labeling it.

> sex1

[1] male female male male male male male

[8] male male male female

Levels: male female

Ordering elements of a vector

Create an 11-element vector of ages.

> age <- c(10,23,48,56,15,25,40,21,60,59,80)

To sort the ages:

> sort(age)

[1] 10 15 21 23 25 40 48 56 59 60 80

The function sort creates a vector with the elements in ascending order. However,

the original vector is not changed.

> median(age)

[1] 40

The median of the ages is 40. To get other quantiles, the function quantile can

be used.

> quantile(age)

0% 25% 50% 75% 100%

10.0 22.0 40.0 57.5 80.0

By default (if other arguments omitted), the 0th, 25th, 50th, 75th and 100th percentiles

are displayed. To obtain the 30th percentile of age, type:

> quantile(age, prob = .3)

30%

23

21

Creating a factor from an existing vector

An age group vector can be created from age using the cut function.

> agegr <- cut(age, breaks=c(0,15,60,100))

This creates 3 distinct groups, which we can call 'children', 'adults' and 'elderly'.

Note that the minimum and maximum of the arguments in cut are the outer most

boundaries.

> is.factor(agegr)

[1] TRUE

> attributes(agegr)

$levels

[1] "(0,15]" "(15,60]" "(60,100]"

$class

[1] "factor"

The object 'agegr' is a factor, with levels shown above. We can check the

correspondence of 'age' and 'agegr' using the data.frame function, which

combines (but not saves) the 2 variables in a data frame and displays the result.

More details on this function is given the chapter 4.

> data.frame(age, agegr)

age agegr

1 10 (0,15]

2 23 (15,60]

3 48 (15,60]

4 56 (15,60]

5 15 (0,15]

6 25 (15,60]

7 40 (15,60]

8 21 (15,60]

9 60 (15,60]

10 59 (15,60]

11 80 (60,100]

Note that the 5th person, who is 15 years old, is classified into the first group and the

9th person, who is 60 years old, is in the second group. The label of each group uses

a square bracket to end the bin indicating that the last number is included in the

group (inclusive cutting). A round bracket in front of the group is exclusive or not

including that value. To obtain a frequency table of the age groups, type:

> table(agegr)

agegr

(0,15] (15,60] (60,100]

2 8 1

There are two children, eight adults and one elderly person.

> summary(agegr) # same result as the preceding command

> class(agegr)

[1] "factor"

22

The age group vector is a factor or categorical vector. It can be transformed into a

simple numeric vector using the 'unclass' function, which is explained in more

detail in chapter 3.

> agegr1 <- unclass(agegr)

> summary(agegr1)

Min. 1st Qu. Median Mean 3rd Qu. Max.

1.000 2.000 2.000 1.909 2.000 3.000

> class(agegr1)

[1] "integer"

Categorical variables, for example sex, race and religion should always be factored.

Age group in this example is a factor although it has an ordered pattern. Declaring a

vector as a factor is very important, particularly when performing regression

analysis, which will be discussed in future chapters.

The unclassed value of a factor is used when the numeric (or integer) values of the

factor are required. For example, if we are have a dataset containing a 'sex' variable,

classed as a factor, and we want to draw a scatter plot in which the colours of the

dots are to be classified by the different levels of 'sex', the colour argument to the

plot function would be 'col = unclass(sex)'. This will be demonstrated in future

chapters.

Missing values

Missing values usually arise from data not being collected. For example, missing

age may be due to a person not giving his or her age. In R, missing values are

denoted by 'NA', abbreviated from 'Not Available'. Any calculation involving NA

will result in NA.

> b <- NA

> b * 3

[1] NA

> c <- 3 + b

> c

[1] NA

As an example of a missing value of a person in a vector series, type the following

commands:

> height <- c(100,150,NA,160)

> height

[1] 100 150 NA 160

> weight <- c(33, 45, 60,55)

> weight

[1] 33 45 60 55

23

Among four subjects in this sample, all weights are available but one height is

missing.

> mean(weight)

[1] 48.25

> mean(height)

[1] NA

We can get the mean weight but not the mean height, although the length of this

vector is available.

> length(height)

[1] 4

In order to get the mean of all available elements, the NA elements should be

removed.

> mean(height, na.rm=TRUE)

[1] 136.6667

The term 'na.rm' means 'not available (value) removed', and is the same as when it

is omitted by using the function na.omit().

> length(na.omit(height))

[1] 3

> mean(na.omit(height))

[1] 136.6667

Thus na.omit() is an independent function that omits missing values from the

argument object. 'na.rm = TRUE' is an internal argument of descriptive statistics

for a vector.

24

Exercises

Problem 1.

Compute the value of 12 + 22 + 32 ... + 1002

Problem 2.

Let 'y' be a series of integers running from 1 to 1,000. Compute the sum of the

elements of 'y' which are multiples of 7.

Problem 3.

The heights (in cm) and weights (in kg) of 10 family members are shown below:

ht wt

Niece 120 22

Son 172 52

GrandPa 163 71

Daughter 158 51

Yai 153 51

GrandMa 148 60

Aunty 160 50

Uncle 170 67

Mom 155 53

Dad 167 64

Create a vector called 'ht' corresponding to the heights of the 11 family members.

Assign the names of the family members to the 'names' attribute of this vector.

Create a vector called 'wt' corresponding to the family member's weights.

Compute the body mass index (BMI) of each person where BMI = weight / height2.

Identify the persons who have the lowest and highest BMI and calculate the

standard deviation of the BMI.

25

Chapter 3: Arrays, Matrices and Tables

Real data for analysis rarely comes as a vector. In most cases, they come as a

dataset containing many rows or records and many columns or variables. In R,

these datasets are called data frames. Before delving into data frames, let us go

through something simpler such as arrays, matrices and tables. Gaining concepts

and skills in handing these types of objects will empower the user to manipulate the

data very effectively and efficiently in the future.

Arrays

An array may generally mean something finely arranged. In mathematics and

computing, an array consists of values arranged in rows and columns. A dataset is

basically an array. Most statistical packages can handle only one dataset or array at

a time. R has a special ability to handle several arrays and datasets simultaneously.

This is because R is an object-oriented program. Moreover, R interprets rows and

columns in a very similar manner.

Folding a vector into an array

Usually a vector has no dimension.

> a <- (1:10)

> a

[1] 1 2 3 4 5 6 7 8 9 10

> dim(a)

NULL

Folding a vector to make an array is simple. Just declare or re-dimension the

number of rows and columns as follows:

> dim(a) <- c(2,5)

> a

[,1] [,2] [,3] [,4] [,5]

[1,] 1 3 5 7 9

[2,] 2 4 6 8 10

26

The numbers in the square brackets are the row and column subscripts. The

command 'dim(a) <- c(2,5)' folds the vector into an array consisting of 2 rows

and 5 columns.

Extracting cells, columns, rows and subarrays using subscripts

While extracting a subset of a vector requires only one component number (or

vector), an array requires two components. Individual elements of an array may be

referenced by giving the name of the array followed by two subscripts separated by

commas inside the square brackets. The first subscript defines row selection; the

second subscript defines column selection. Specific rows and columns may be

extracted by omitting one of the components, but keeping the comma.

> a[1,] # for the first row and all columns of array 'a'

> a[,3] # for all rows of the third column

> a[2,4] # extract 1 cell from the 2nd row and 4th column

> a[2,2:4] # 2nd row, from 2nd to 4th columns

The command a[,] and a[] both choose all rows and all columns of 'a' and thus

are the same as typing 'a'. An array may also have 3 dimensions.

> b <- 1:24

> dim(b) <- c(3,4,2) # or b <- array(1:24, c(3,4,2))

> b

, , 1

[,1] [,2] [,3] [,4]

[1,] 1 4 7 10

[2,] 2 5 8 11

[3,] 3 6 9 12

, , 2

[,1] [,2] [,3] [,4]

[1,] 13 16 19 22

[2,] 14 17 20 23

[3,] 15 18 21 24

The first value of the dimension refers to the number of rows, followed by number

of columns and finally the number of strata.

Elements of this three-dimensional array can be extracted in a similar way.

> b[1:3,1:2,2]

[,1] [,2]

[1,] 13 16

[2,] 14 17

[3,] 15 18

In fact, an array can have much higher dimensions, but for most epidemiological

analysis are rarely used or needed.

27

Vector binding

Apart from folding a vector, an array can be created from vector binding, either by

column (using the function cbind) or by row (using the function rbind). Let's

return to our fruits vector.

> fruit <- c(5, 10, 1, 20)

Suppose a second person buys fruit but in different amounts to the first person.

> fruit2 <- c(1, 5, 3, 4)

To bind 'fruits' with 'fruits2', which are vectors of the same length, type:

> Col.fruit <- cbind(fruits, fruits2)

We can give names to the rows of this array:

> rownames(Col.fruit) <- c("orange","banana","durian","mango")

> Col.fruit

fruits fruits2

orange 5 1

banana 10 5

durian 1 3

mango 20 4

Alternatively, the binding can be done by row.

> Row.fruit <- rbind(fruits, fruits2)

> colnames(Col.fruit) <- c("orange","banana","durian","mango")

> Row.fruit

orange banana durian mango

fruits 5 10 1 20

fruits2 1 5 3 4

Transposition of an array

Array transposition means exchanging rows and columns of the array. In the above

example, 'Row.fruits' is a transposition of 'Col.fruits' and vice versa.

Array transposition is achieved using the t function.

> t(Col.fruit)

> t(Row.fruit)

Basic statistics of an array

The total number of fruits bought by both persons is obtained by:

> sum(Col.fruit)

The total number of bananas is obtained by:

> sum(Col.fruit[2,])

To obtain descriptive statistics of each buyer:

28

> summary(Col.fruit)

To obtain descriptive statistics of each kind of fruit:

> summary(Row.fruit)

Suppose fruits3 is created but with one more kind of fruit added:

> fruits3 <- c(20, 15, 3, 5, 8)

> cbind(Col.fruit, fruits3)

fruits fruits2 fruits3

orange 5 1 20

banana 10 5 15

durian 1 3 3

mango 20 4 5

Warning message:

number of rows of result is not a multiple of vector length

(arg 2) in: cbind(Col.fruit, fruits3)

Note that the last element of 'fruits3' is removed before being added.

> fruits4 <- c(1,2,3)

> cbind(Col.fruit, fruits4)

fruits fruits2 fruits4

orange 5 1 1

banana 10 5 2

durian 1 3 3

mango 20 4 1

Warning message:

number of rows of result is not a multiple of vector length

(arg 2) in: cbind(Col.fruit, fruits4)

Note that 'fruits4' is shorter than the length of the first vector argument. In this

situation R will automatically recycle the element of the shorter vector, inserting

the first element of 'fruits4' into the fourth row, with a warning.

String arrays

Similar to a vector, an array can consist of character string objects.

> Thais <- c("Somsri", "Daeng", "Somchai", "Veena")

> dim(Thais) <- c(2,2); Thais

[,1] [,2]

[1,] "Somsri" "Somchai"

[2,] "Daeng" "Veena"

Note that the elements are folded in colum-wise, not row-wise, sequence.

Implicit array of two vectors of equal length

Two vectors, especially with the same length, may refer to each other without

formal binding.

29

> cities <- c("Bangkok","Hat Yai","Chiang Mai")

> postcode <- c(10000, 90110, 50000)

> postcode[cities=="Bangkok"]

[1] 10000

This gives the same result as

> subset(postcode, cities=="Bangkok")

[1] 10000

For a single vector, thre are many ways to identify the order of a specific element.

For example, to find the index of "Hat Yai" in the city vector, the following four

commands all give the same result.

> (1:length(cities))[cities=="Hat Yai"]

> (1:3)[cities=="Hat Yai"]

> subset(1:3, cities=="Hat Yai")

> which(cities=="Hat Yai")

Note that when a character vector is binded with a numeric vector, the numeric

vector is coerced into a character vector, since all elements of an array must be of

the same type.

> cbind(cities,postcode)

cities postcode

[1,] "Bangkok" "10000"

[2,] "Hat Yai" "90110"

[3,] "Chiang Mai" "50000"

Matrices

A matrix is a two-dimensional array. It has several mathematical properties and

operations that are used behind statistical computations such as factor analysis,

generalized linear modelling and so on.

Users of statistical packages do not need to deal with matrices directly but some of

the results of the analyses are in matrix form, both displayed on the screen that can

readily be seen and hidden as a returned object that can be used later. For exercise

purposes, we will examine the covariance matrix, which is an object returned from

a regression analysis in a future chapter.

Tables

A table is an array emphasizing the relationship between values among cells.

Usually, a table is a result of an analysis, e.g. a cross-tabulation between to

categorical variables (using function table).

30

Suppose six patients who are male, female, female, male, female and female attend

a clinic. If the code is 1 for male and 2 for female, then to create this in R type:

> sex <- c(1,2,2,1,2,2)

Similarly, if we characterize the ages of the patients as being either young or old

and the first three patients are young, the next two are old and the last one is young,

and the codes for this age classification are 1 for young and 2 for old, then we can

create this in R by typing.

> age <- c(1,1,1,2,2,1)

Suppose also that these patients had one to six visits to the clinic, respectively.

> visits <- c(1,2,3,4,5,6)

> table1 <- table(sex, age); table1

age

sex 1 2

1 1 1

2 3 1

Note that table1 gives counts of each combination of the vectors sex and age

while 'table2' (below) gives the sum of the number of visits based on the four

different combinations of sex and age.

> table2 <- tapply(visits, list(Sex=sex, Age=age), FUN=sum)

> table2

Age

Sex 1 2

1 1 4

2 11 5

To obtain the mean of each combination type:

> tapply(visits, list(Sex=sex, Age=age), FUN=mean)

Age

Sex 1 2

1 1.000 4

2 3.667 5

Although 'table1' has class table, the class of 'table2' is still a matrix. One can

convert it simply using the function as.table.

> table2 <- as.table(table2)

Summary of table vs summary of array

In R, applying the function summary to a table performs a chi squared test of

independence.

31

> summary(table1)

Number of cases in table: 6

Number of factors: 2

Test for independence of all factors:

Chisq = 0.375, df = 1, p-value = 0.5403

Chi-squared approximation may be incorrect

In contrast, applying summary to a non-table array produces descriptive statistics

of each column.

> is.table(Col.fruits)

[1] FALSE

> summary(Col.fruits)

fruits fruits2

Min. : 1.0 Min. :1.00

1st Qu.: 4.0 1st Qu.:2.50

Median : 7.5 Median :3.50

Mean : 9.0 Mean :3.25

3rd Qu.:12.5 3rd Qu.:4.25

Max. :20.0 Max. :5.00

> fruits.table <- as.table(Col.fruits)

> summary(fruits.table)

Number of cases in table: 49

Number of factors: 2

Test for independence of all factors:

Chisq = 6.675, df = 3, p-value = 0.08302

Chi-squared approximation may be incorrect

> fisher.test(fruits.table)

Fisher's Exact Test for Count Data

data: fruits.table

p-value = 0.07728

alternative hypothesis: two.sided

Lists

An array forces all cells from different columns and rows to be the same type. If

any cell is a character then all cells will be coerced into a character. A list is

different. It can be a mixture of different types of objects compounded into one

entity. It can be a mixture of vectors, arrays, tables or any object type.

> list1 <- list(a=1, b=fruits, c=cities)

> list1

$a

[1] 1

$b

[1] 5 10 1 20

$c

[1] "Bangkok" "Hat Yai" "Chiang Mai"

32

Note that the arguments of the function list consist of a series of new objects

being assigned a value from existing objects or values. When properly displayed,

each new name is prefixed with a dollar sign, $.

The creation of a list is not a common task in ordinary data analysis. However, a list

is sometimes required in the arguments to some functions.

Removing objects from the computer memory also requires a list as the argument to

the function rm.

> rm(list=c("list1", "fruits"))

This is equivalent to

> rm(list1); rm(fruits)

A list may also be returned from the results of an analysis, but appears under a

special class.

> sample1 <- rnorm(10)

This generates a sample of 10 numbers from a normal distribution.

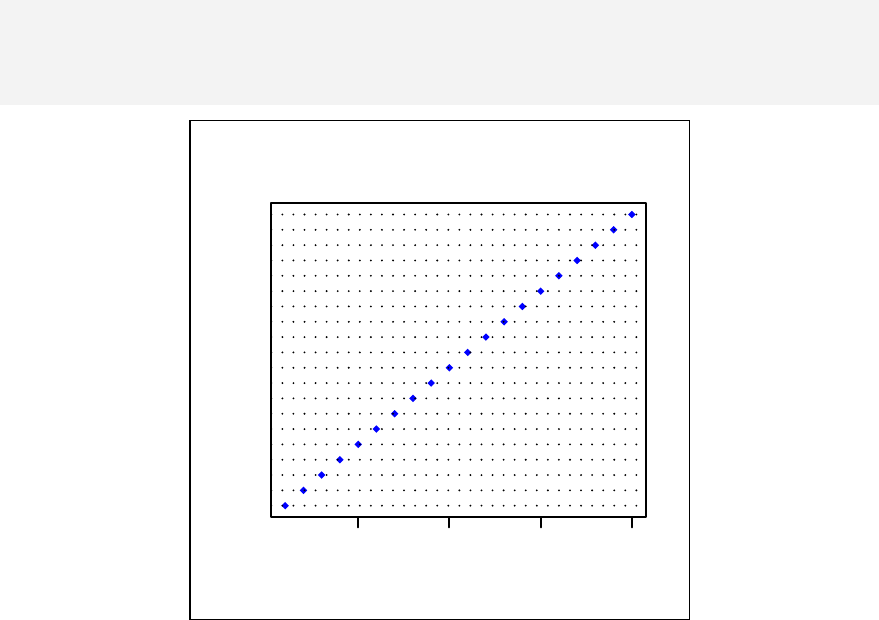

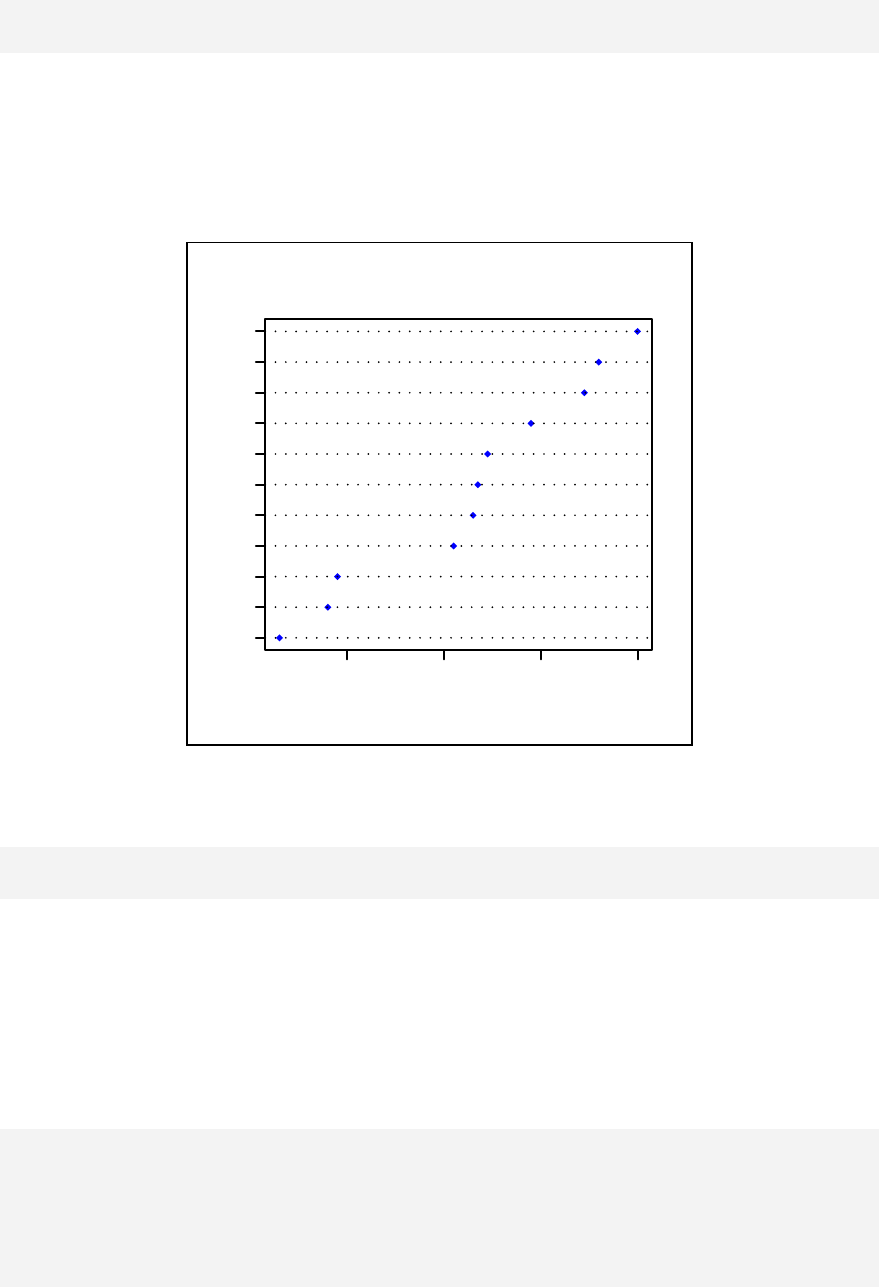

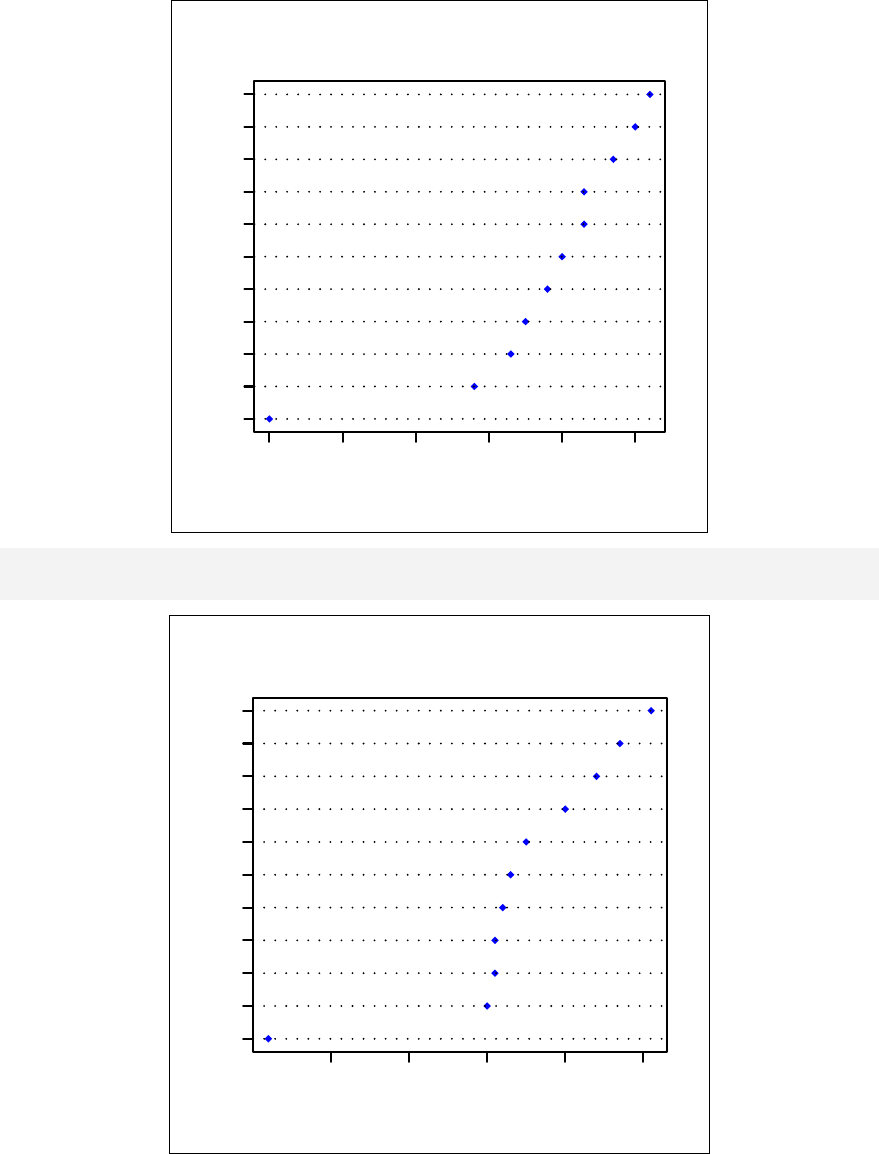

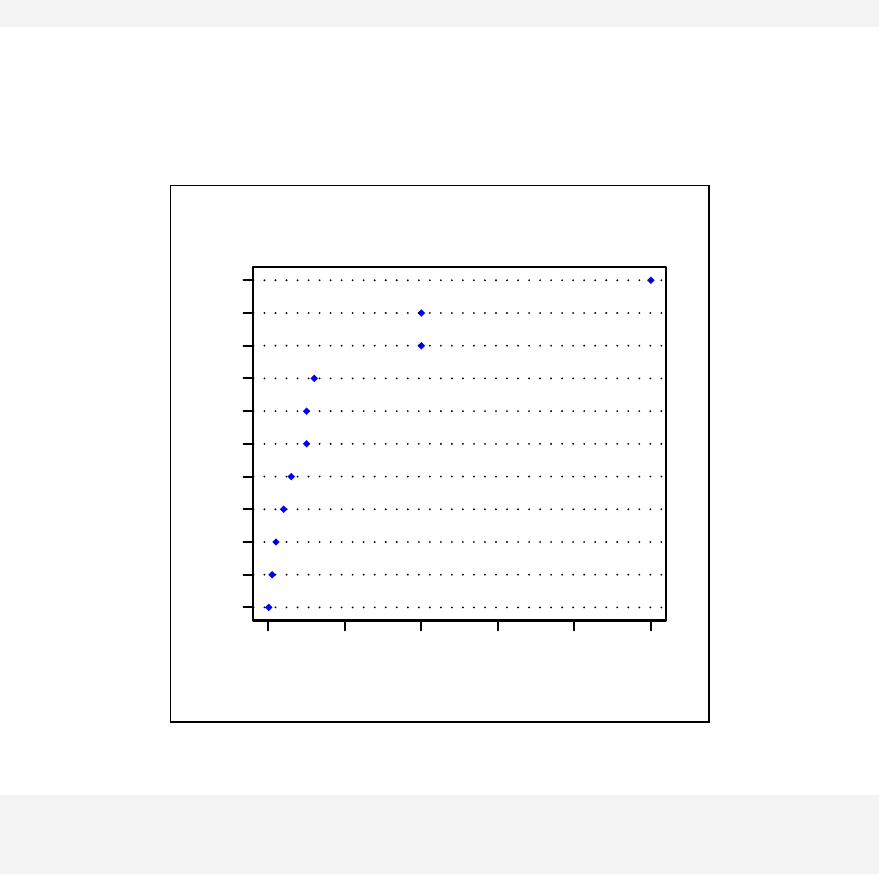

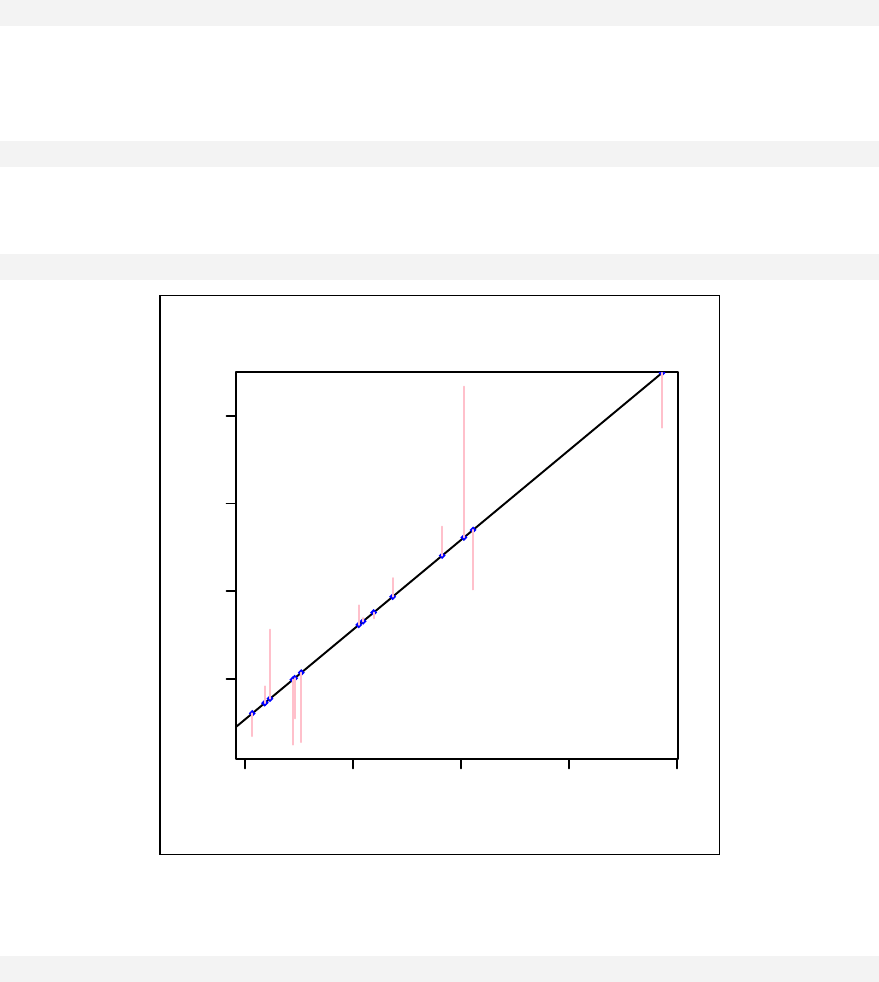

> qqnorm(sample1)

The qqnorm function plots the sample quantiles, or the sorted observed values,

against the theoretical quantiles, or the corresponding expected values if the data

were perfectly normally distributed. It is used here for the sake of demonstration of

the list function only.

> list2 <- qqnorm(sample1)

This stores the results into an object called list2.

> list2

$x

[1] 0.123 -1.547 -0.375 0.655 1.000 0.375 -0.123

[8] -1.000 -0.655 1.547

$y

[1] -0.4772 -0.9984 -0.7763 0.0645 0.9595 -0.1103

[7] -0.5110 -0.9112 -0.8372 2.4158

The command qqnorm(sample1) is used as a graphical method for checking

normality. While it produces a graph on the screen, it also gives a list of the x and y

coordinates, which can be saved and used for further calculation.

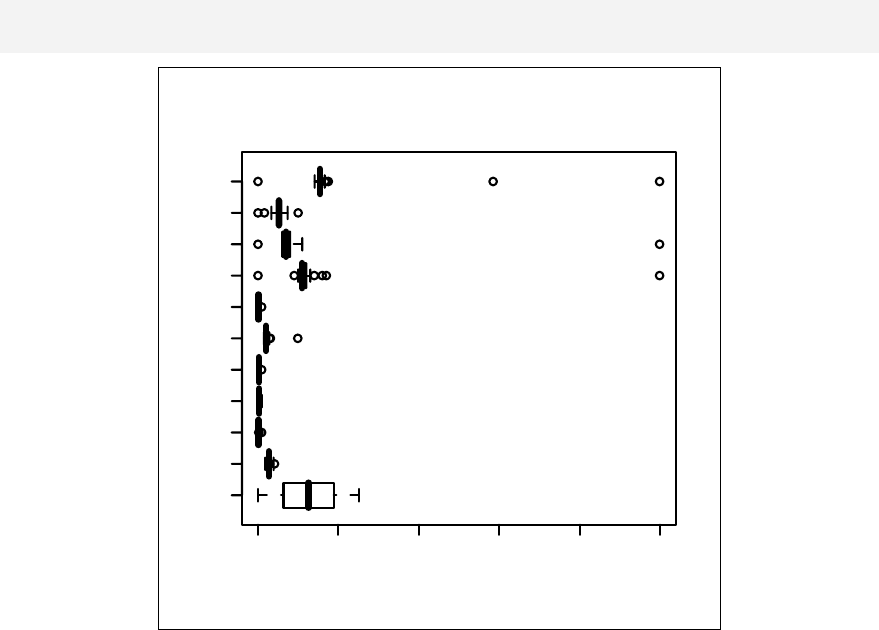

Similarly, boxplot(sample1) returns another list of objects to facilitate

plotting of a boxplot.

33

Exercises

Problem 1.

Demonstrate a few simple ways to create the array below

[,1][,2][,3][,4][,5][,6][,7][,8][,9][,10]

[1,] 1 2 3 4 5 6 7 8 9 10

[2,] 11 12 13 14 15 16 7 18 19 20

Problem 2.

Extract from the above array the odd numbered columns.

Problem 3.

Cross-tabulation between status of a disease and a putative exposure have the

following results:

Diseased Non-diseased

Exposed 15 20

Non-exposed 30 22

Create the table in R and perform chi-squared and Fisher's exact tests.

34

35

Chapter 4: Data Frames

In the preceding chapter, examples were given on arrays and lists. In this chapter,

data frames will be the main focus. For most researchers, these are sometimes

called datasets. However, a complete dataset can contain more than one data frame.

These contain the real data that most researchers have to work with.

Comparison of arrays and data frames

Many rules used for arrays are also applicable to data frames. For example, the

main structure of a data frame consists of columns (or variables) and rows (or

records). Rules for subscripting, column or row binding and selection of a subset in

arrays are directly applicable to data frames.

Data frames are however slightly more complicated than arrays. All columns in an

array are forced to be character if just one cell is a character. A data frame, on the

other hand, can have different classes of columns. For example, a data frame can

consist of a column of 'idnumber', which is numeric and a column of 'name', which