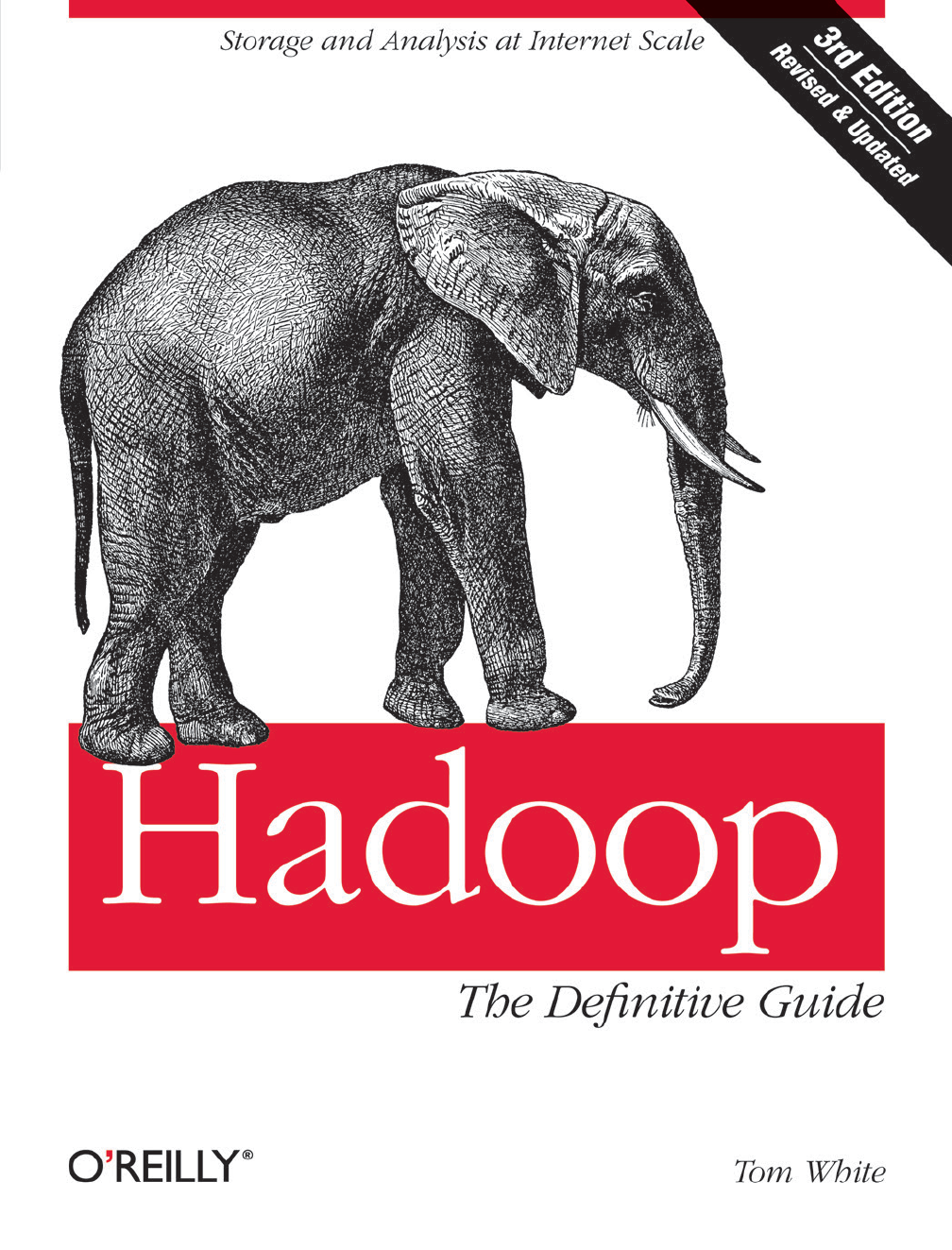

Hadoop The Definitive Guide 3rd Edition Orielly May 2012

User Manual:

Open the PDF directly: View PDF ![]() .

.

Page Count: 686 [warning: Documents this large are best viewed by clicking the View PDF Link!]

- Table of Contents

- Foreword

- Preface

- Chapter 1. Meet Hadoop

- Chapter 2. MapReduce

- Chapter 3. The Hadoop Distributed Filesystem

- Chapter 4. Hadoop I/O

- Chapter 5. Developing a MapReduce Application

- Chapter 6. How MapReduce Works

- Chapter 7. MapReduce Types and Formats

- Chapter 8. MapReduce Features

- Chapter 9. Setting Up a Hadoop Cluster

- Cluster Specification

- Cluster Setup and Installation

- SSH Configuration

- Hadoop Configuration

- YARN Configuration

- Security

- Benchmarking a Hadoop Cluster

- Hadoop in the Cloud

- Chapter 10. Administering Hadoop

- Chapter 11. Pig

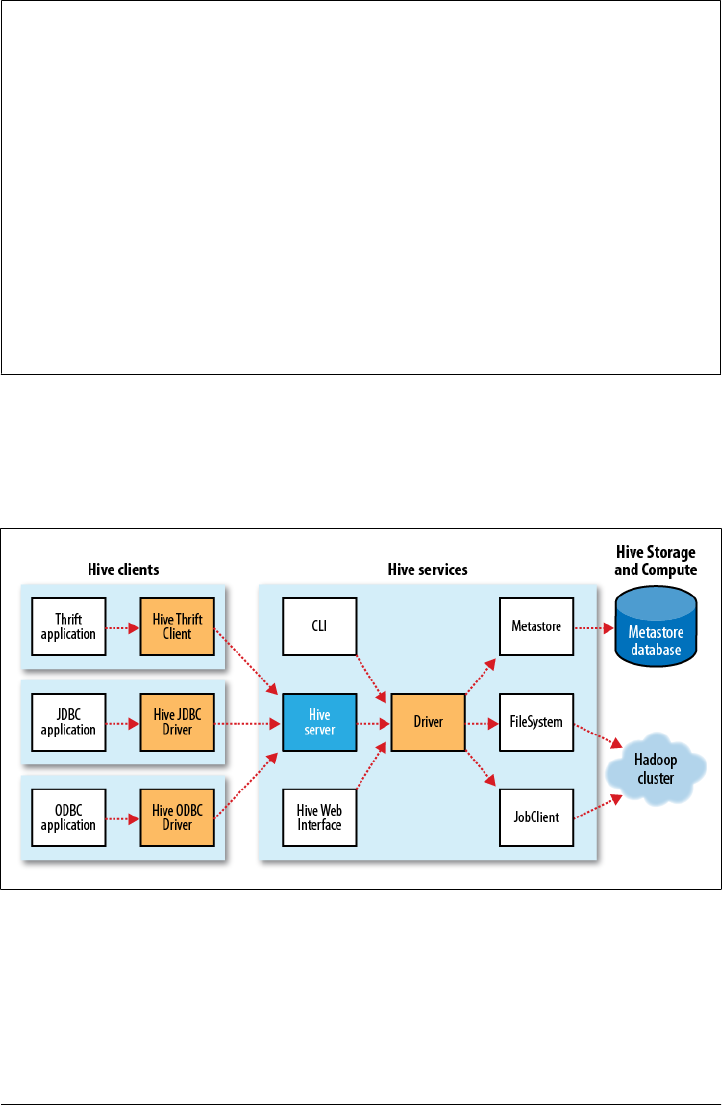

- Chapter 12. Hive

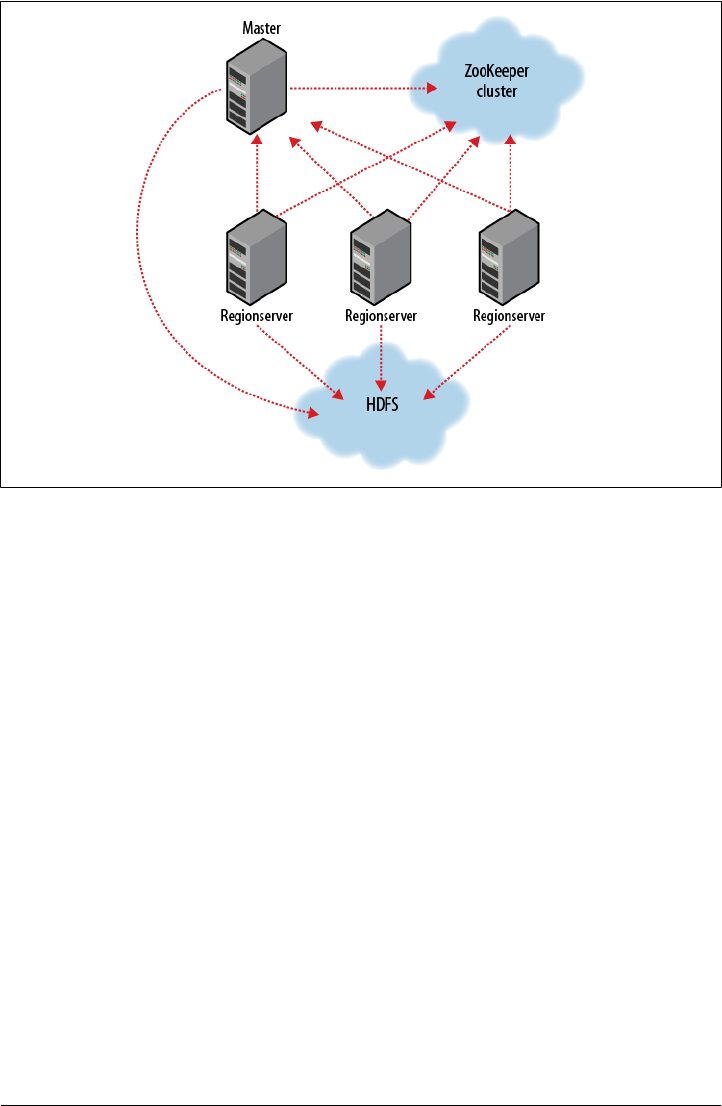

- Chapter 13. HBase

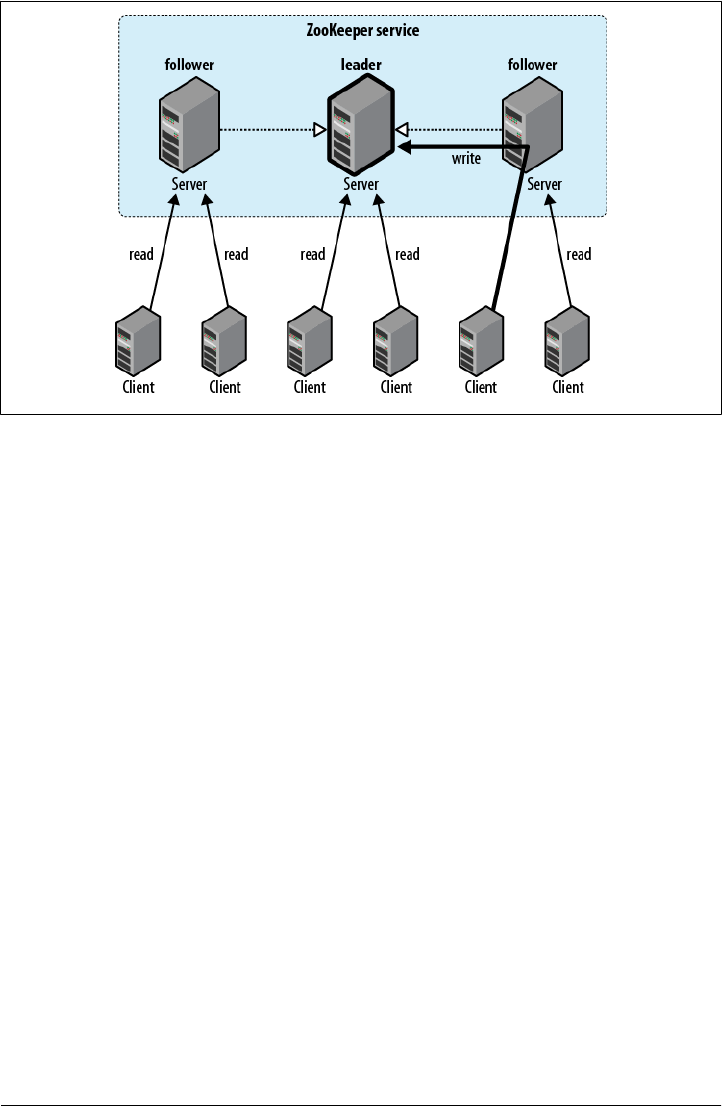

- Chapter 14. ZooKeeper

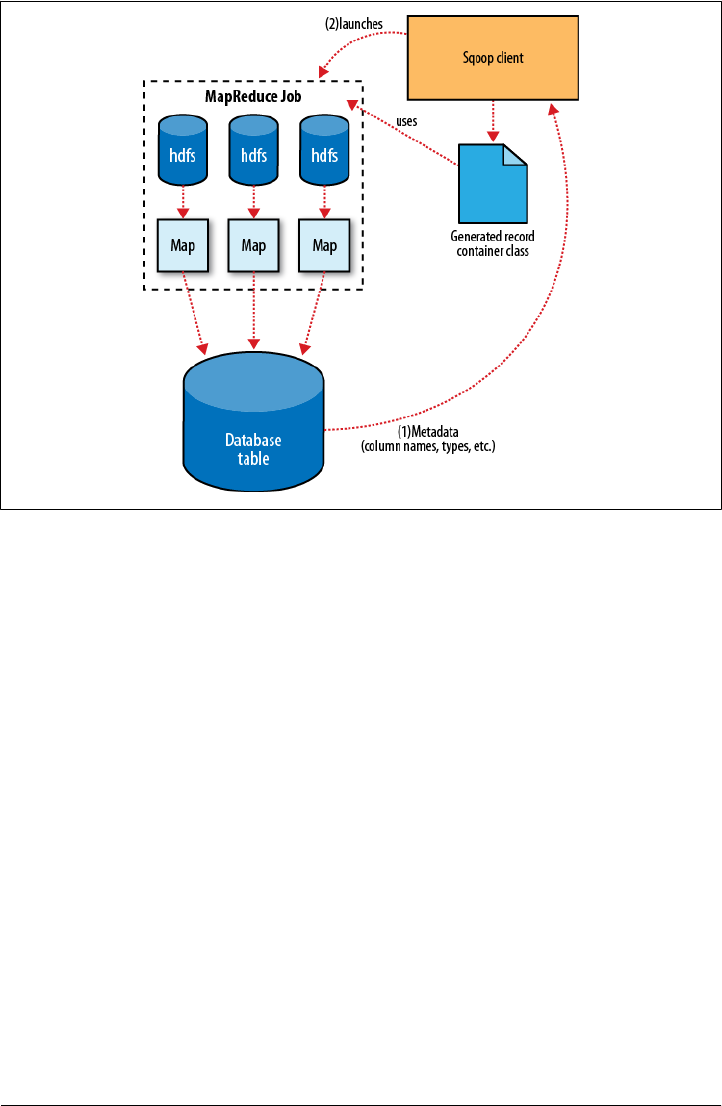

- Chapter 15. Sqoop

- Chapter 16. Case Studies

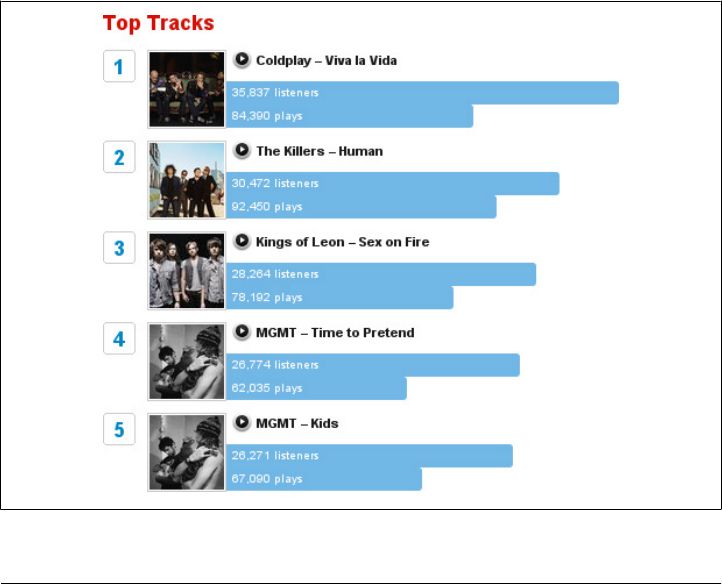

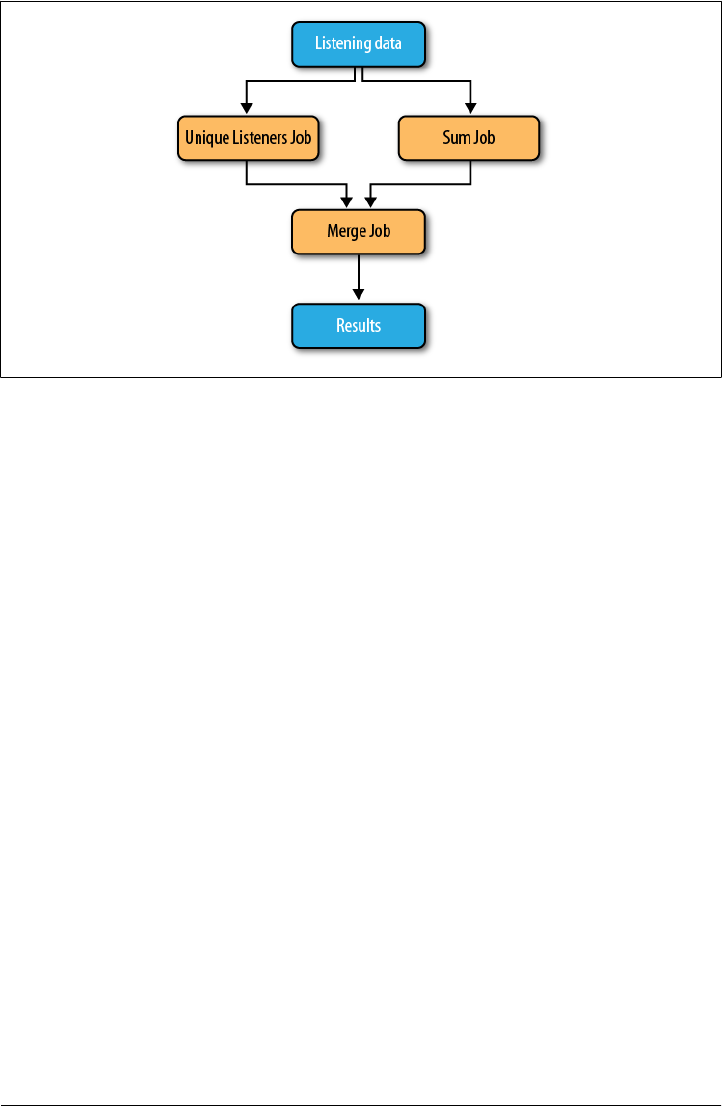

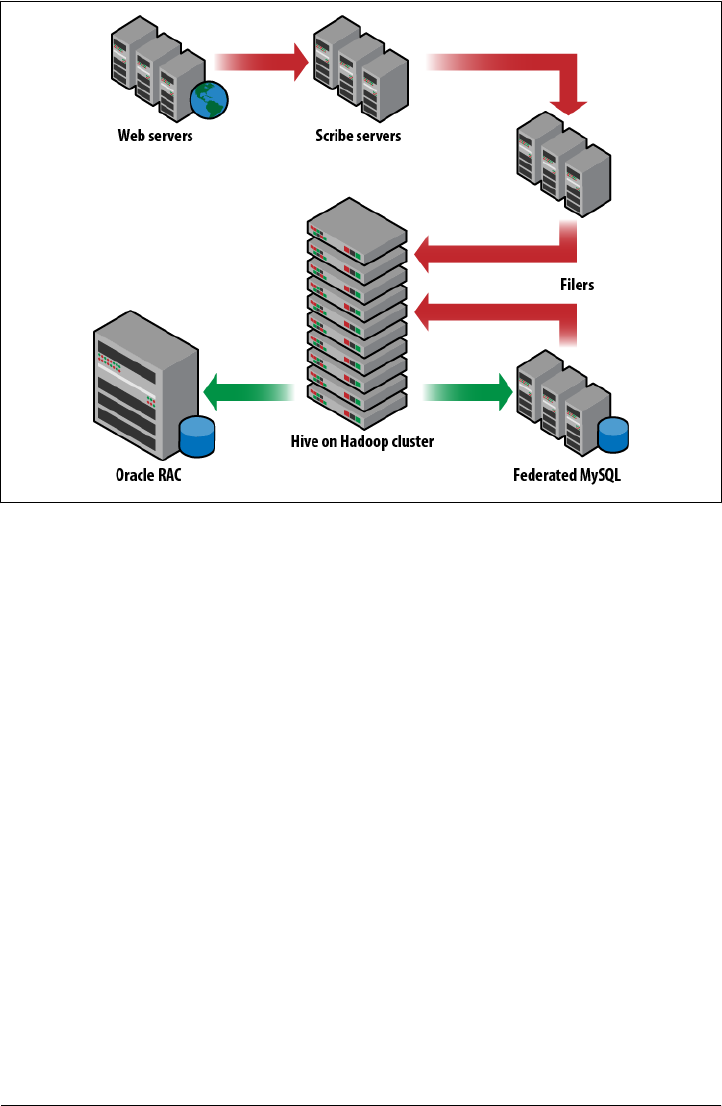

- Hadoop Usage at Last.fm

- Hadoop and Hive at Facebook

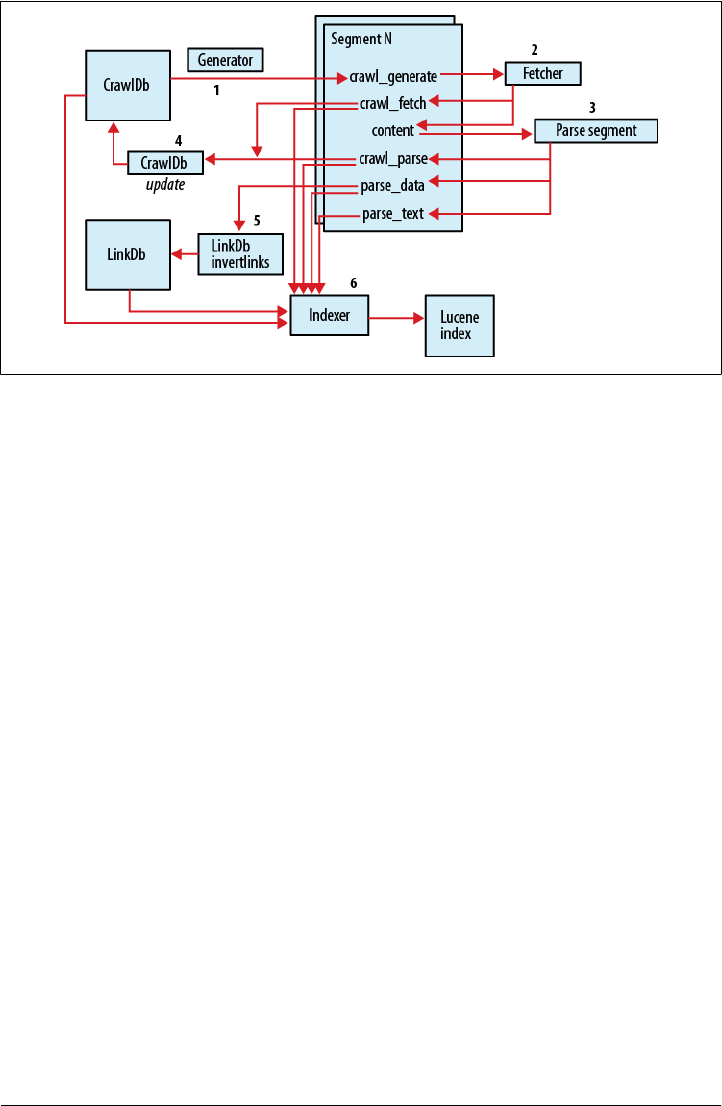

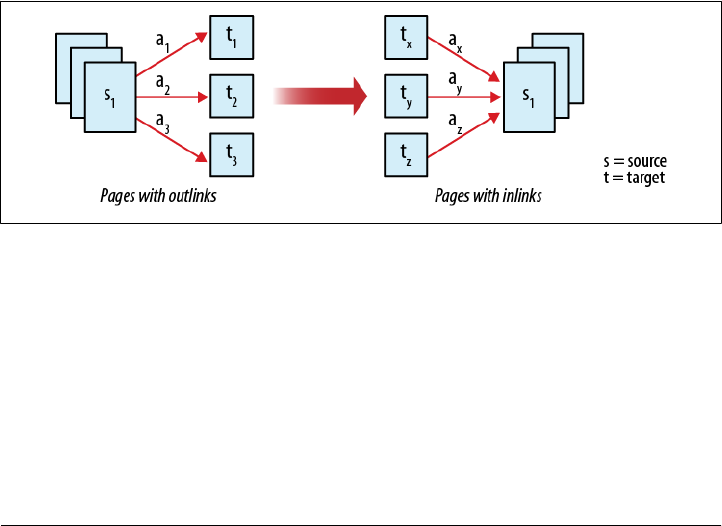

- Nutch Search Engine

- Log Processing at Rackspace

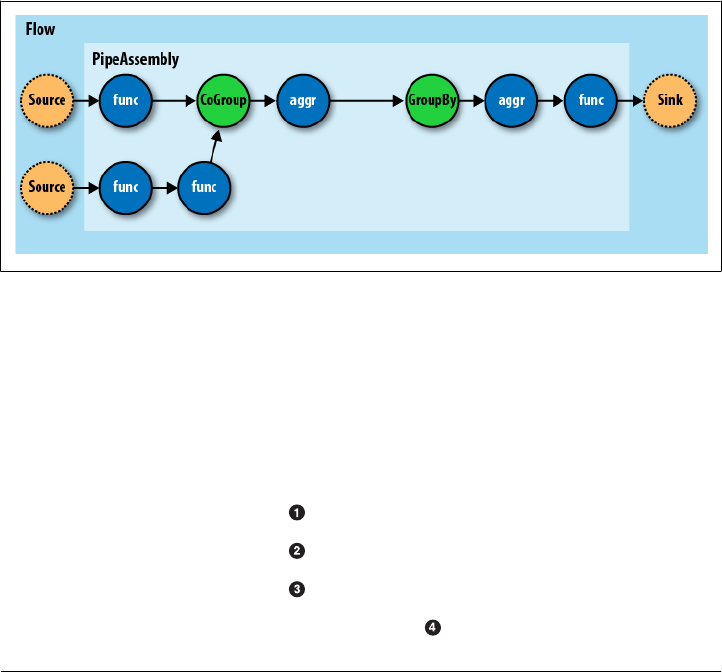

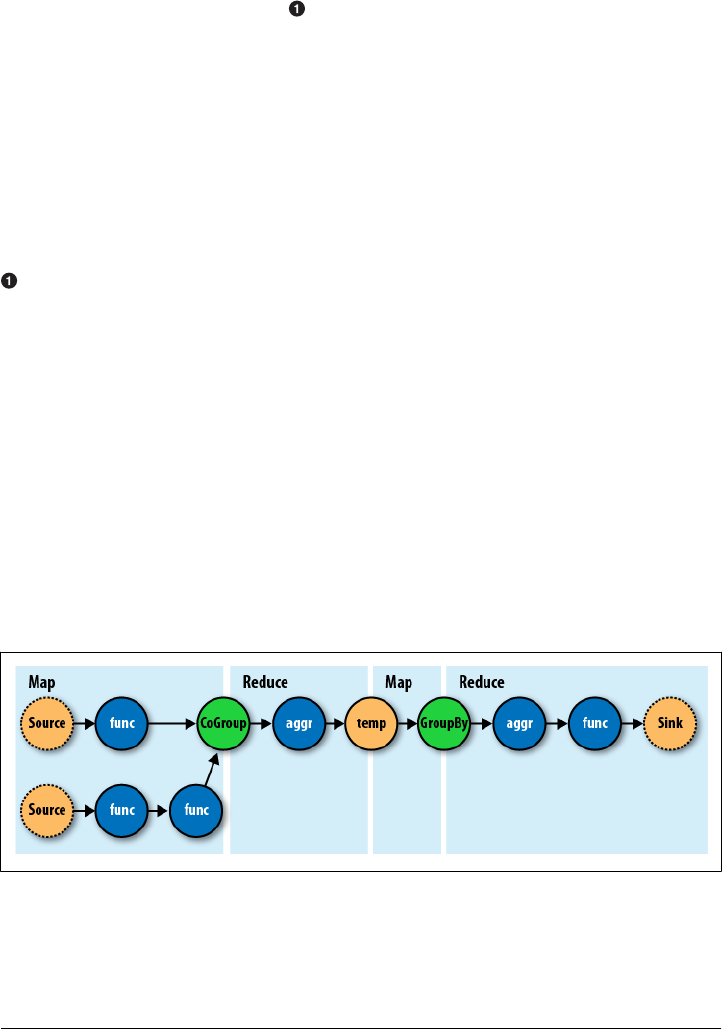

- Cascading

- TeraByte Sort on Apache Hadoop

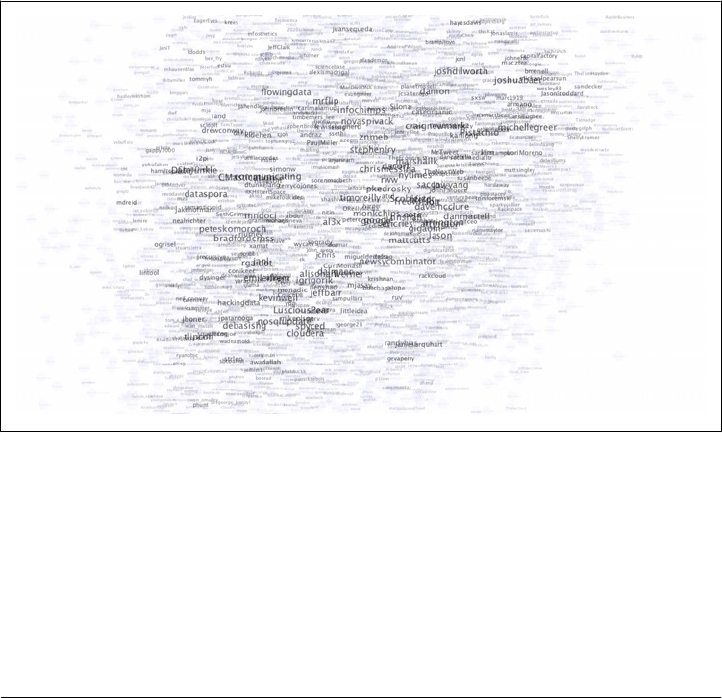

- Using Pig and Wukong to Explore Billion-edge Network Graphs

- Appendix A. Installing Apache Hadoop

- Appendix B. Cloudera’s Distribution Including Apache Hadoop

- Appendix C. Preparing the NCDC Weather Data

- Index

THIRD EDITION

Hadoop: The Definitive Guide

Beijing

•

Cambridge

•

Farnham

•

Köln

•

Sebastopol

•

Tokyo

Hadoop: The Definitive Guide, Third Edition

Editors:

Production Editor:

Copyeditor:

Proofreader:

Indexer:

Cover Designer:

Interior Designer:

Illustrator:

Revision History for the Third Edition:

Table of Contents

Foreword . .................................................................. xv

Preface .................................................................... xvii

1. Meet Hadoop ........................................................... 1

2. MapReduce ........................................................... 17

v

3. The Hadoop Distributed Filesystem . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 43

4. Hadoop I/O ........................................................... 81

vi | Table of Contents

5. Developing a MapReduce Application . ................................... 143

Table of Contents | vii

6. How MapReduce Works ................................................ 189

7. MapReduce Types and Formats .......................................... 223

8. MapReduce Features .................................................. 259

viii | Table of Contents

9. Setting Up a Hadoop Cluster ............................................ 297

Table of Contents | ix

10. Administering Hadoop ................................................. 339

11. Pig . ................................................................ 367

x | Table of Contents

12. Hive ................................................................ 413

13. HBase ............................................................... 459

Table of Contents | xi

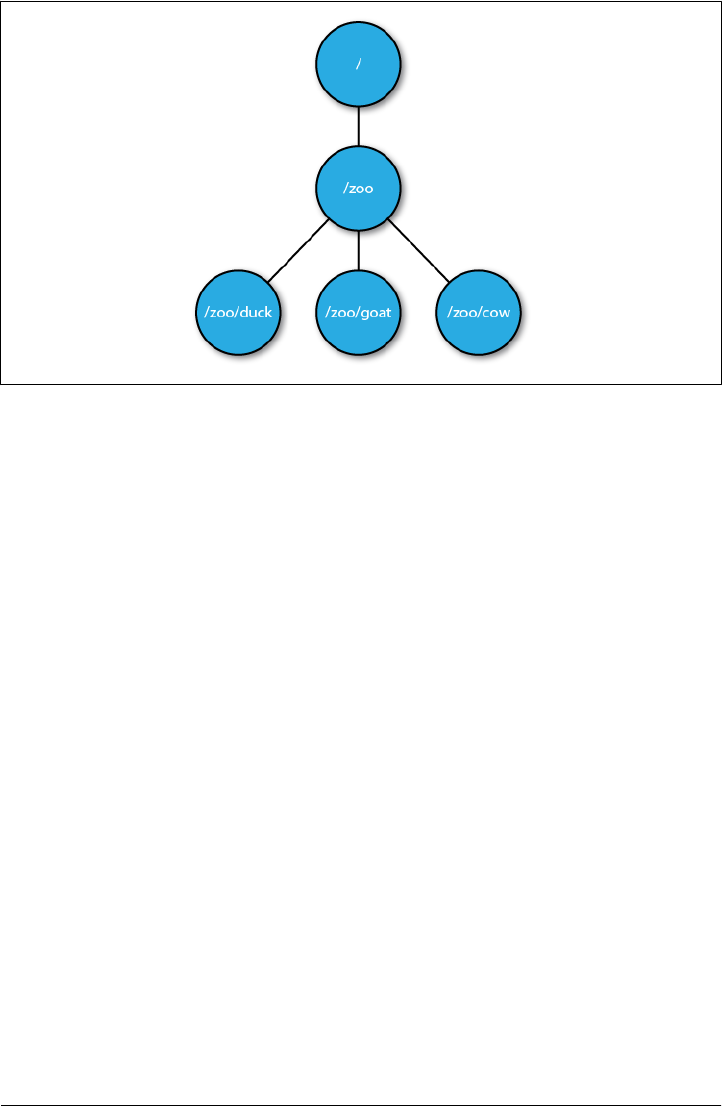

14. ZooKeeper . .......................................................... 489

xii | Table of Contents

15. Sqoop ............................................................... 527

16. Case Studies . ........................................................ 547

Table of Contents | xiii

A. Installing Apache Hadoop .............................................. 617

B. Cloudera’s Distribution Including Apache Hadoop . . . . . . . . . . . . . . . . . . . . . . . . . . 623

C. Preparing the NCDC Weather Data . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 625

Index ..................................................................... 629

xiv | Table of Contents

Foreword

xv

xvi | Foreword

Preface

xvii

Administrative Notes

import org.apache.hadoop.io.*

What’s in This Book?

xviii | Preface

What’s New in the Second Edition?

What’s New in the Third Edition?

Preface | xix

Conventions Used in This Book

Constant width

Constant width bold

Constant width italic

Using Code Examples

xx | Preface

Safari® Books Online

How to Contact Us

Preface | xxi

Acknowledgments

xxii | Preface

Preface | xxiii

CHAPTER 1

Meet Hadoop

Data!

1

2 | Chapter 1:Meet Hadoop

Data Storage and Analysis

Data Storage and Analysis | 3

Comparison with Other Systems

Rational Database Management System

4 | Chapter 1:Meet Hadoop

Traditional RDBMS MapReduce

Data size Gigabytes Petabytes

Access Interactive and batch Batch

Updates Read and write many times Write once, read many times

Structure Static schema Dynamic schema

Integrity High Low

Scaling Nonlinear Linear

Comparison with Other Systems | 5

Grid Computing

6 | Chapter 1:Meet Hadoop

Comparison with Other Systems | 7

Volunteer Computing

8 | Chapter 1:Meet Hadoop

A Brief History of Hadoop

The Origin of the Name “Hadoop”

JobTracker

A Brief History of Hadoop | 9

10 | Chapter 1:Meet Hadoop

Hadoop at Yahoo!

A Brief History of Hadoop | 11

Apache Hadoop and the Hadoop Ecosystem

12 | Chapter 1:Meet Hadoop

Hadoop Releases

Hadoop Releases | 13

Feature 1.x 0.22 2.x

Secure authentication Yes No Yes

Old configuration names Yes Deprecated Deprecated

New configuration names No Yes Yes

Old MapReduce API Yes Yes Yes

New MapReduce API Yes (with some

missing libraries)

Yes Yes

MapReduce 1 runtime (Classic) Yes Yes No

MapReduce 2 runtime (YARN) No No Yes

HDFS federation No No Yes

HDFS high-availability No No Yes

14 | Chapter 1:Meet Hadoop

What’s Covered in This Book

Configuration names

dfs.namenode

dfs.name.dirdfs.namenode.name.dir

mapreducemapredmapred.job.name

mapreduce.job.name

MapReduce APIs

oldapi

Compatibility

Hadoop Releases | 15

InterfaceStability.Stable

InterfaceStabil

ity.EvolvingInterfaceStability.Unstable

org.apache.hadoop.classification

16 | Chapter 1:Meet Hadoop

CHAPTER 2

MapReduce

A Weather Dataset

Data Format

17

0057

332130 # USAF weather station identifier

99999 # WBAN weather station identifier

19500101 # observation date

0300 # observation time

4

+51317 # latitude (degrees x 1000)

+028783 # longitude (degrees x 1000)

FM-12

+0171 # elevation (meters)

99999

V020

320 # wind direction (degrees)

1 # quality code

N

0072

1

00450 # sky ceiling height (meters)

1 # quality code

C

N

010000 # visibility distance (meters)

1 # quality code

N

9

-0128 # air temperature (degrees Celsius x 10)

1 # quality code

-0139 # dew point temperature (degrees Celsius x 10)

1 # quality code

10268 # atmospheric pressure (hectopascals x 10)

1 # quality code

% ls raw/1990 | head

010010-99999-1990.gz

010014-99999-1990.gz

010015-99999-1990.gz

010016-99999-1990.gz

010017-99999-1990.gz

010030-99999-1990.gz

010040-99999-1990.gz

010080-99999-1990.gz

010100-99999-1990.gz

010150-99999-1990.gz

18 | Chapter 2:MapReduce

Analyzing the Data with Unix Tools

#!/usr/bin/env bash

for year in all/*

do

echo -ne `basename $year .gz`"\t"

gunzip -c $year | \

awk '{ temp = substr($0, 88, 5) + 0;

q = substr($0, 93, 1);

if (temp !=9999 && q ~ /[01459]/ && temp > max) max = temp }

END { print max }'

done

END

% ./max_temperature.sh

1901 317

1902 244

1903 289

1904 256

1905 283

...

Analyzing the Data with Unix Tools | 19

Analyzing the Data with Hadoop

Map and Reduce

20 | Chapter 2:MapReduce

0067011990999991950051507004...9999999N9+00001+99999999999...

0043011990999991950051512004...9999999N9+00221+99999999999...

0043011990999991950051518004...9999999N9-00111+99999999999...

0043012650999991949032412004...0500001N9+01111+99999999999...

0043012650999991949032418004...0500001N9+00781+99999999999...

(0, 0067011990999991950051507004...9999999N9+00001+99999999999...)

(106, 0043011990999991950051512004...9999999N9+00221+99999999999...)

(212, 0043011990999991950051518004...9999999N9-00111+99999999999...)

(318, 0043012650999991949032412004...0500001N9+01111+99999999999...)

(424, 0043012650999991949032418004...0500001N9+00781+99999999999...)

(1950, 0)

(1950, 22)

(1950, −11)

(1949, 111)

(1949, 78)

(1949, [111, 78])

(1950, [0, 22, −11])

(1949, 111)

(1950, 22)

Analyzing the Data with Hadoop | 21

Java MapReduce

Mapper

map()

import java.io.IOException;

import org.apache.hadoop.io.IntWritable;

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Mapper;

public class MaxTemperatureMapper

extends Mapper<LongWritable, Text, Text, IntWritable> {

private static final int MISSING = 9999;

@Override

public void map(LongWritable key, Text value, Context context)

throws IOException, InterruptedException {

String line = value.toString();

String year = line.substring(15, 19);

int airTemperature;

if (line.charAt(87) == '+') { // parseInt doesn't like leading plus signs

airTemperature = Integer.parseInt(line.substring(88, 92));

} else {

airTemperature = Integer.parseInt(line.substring(87, 92));

}

String quality = line.substring(92, 93);

if (airTemperature != MISSING && quality.matches("[01459]")) {

context.write(new Text(year), new IntWritable(airTemperature));

}

}

}

Mapper

22 | Chapter 2:MapReduce

org.apache.hadoop.io

LongWritableLongTextString

IntWritableInteger

map()Text

Stringsubstring()

map()Context

Text

IntWritable

Reducer

import java.io.IOException;

import org.apache.hadoop.io.IntWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Reducer;

public class MaxTemperatureReducer

extends Reducer<Text, IntWritable, Text, IntWritable> {

@Override

public void reduce(Text key, Iterable<IntWritable> values,

Context context)

throws IOException, InterruptedException {

int maxValue = Integer.MIN_VALUE;

for (IntWritable value : values) {

maxValue = Math.max(maxValue, value.get());

}

context.write(key, new IntWritable(maxValue));

}

}

TextIntWritable

TextIntWritable

Analyzing the Data with Hadoop | 23

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.IntWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

public class MaxTemperature {

public static void main(String[] args) throws Exception {

if (args.length != 2) {

System.err.println("Usage: MaxTemperature <input path> <output path>");

System.exit(-1);

}

Job job = new Job();

job.setJarByClass(MaxTemperature.class);

job.setJobName("Max temperature");

FileInputFormat.addInputPath(job, new Path(args[0]));

FileOutputFormat.setOutputPath(job, new Path(args[1]));

job.setMapperClass(MaxTemperatureMapper.class);

job.setReducerClass(MaxTemperatureReducer.class);

job.setOutputKeyClass(Text.class);

job.setOutputValueClass(IntWritable.class);

System.exit(job.waitForCompletion(true) ? 0 : 1);

}

}

Job

JobsetJarByClass()

Job

addInputPath()FileInputFormat

addInputPath()

setOutput

Path()FileOutputFormat

24 | Chapter 2:MapReduce

setMapperClass()

setReducerClass()

setOutputKeyClass()setOutputValueClass()

setMapOutputKeyClass()setMapOutputValueClass()

TextInputFormat

waitForCompletion()Job

waitForCompletion()

truefalse01

A test run

% export HADOOP_CLASSPATH=hadoop-examples.jar

% hadoop MaxTemperature input/ncdc/sample.txt output

12/02/04 11:50:41 WARN util.NativeCodeLoader: Unable to load native-hadoop library

for your platform... using builtin-java classes where applicable

12/02/04 11:50:41 WARN mapred.JobClient: Use GenericOptionsParser for parsing the

arguments. Applications should implement Tool for the same.

12/02/04 11:50:41 INFO input.FileInputFormat: Total input paths to process : 1

12/02/04 11:50:41 INFO mapred.JobClient: Running job: job_local_0001

12/02/04 11:50:41 INFO mapred.Task: Using ResourceCalculatorPlugin : null

12/02/04 11:50:41 INFO mapred.MapTask: io.sort.mb = 100

12/02/04 11:50:42 INFO mapred.MapTask: data buffer = 79691776/99614720

12/02/04 11:50:42 INFO mapred.MapTask: record buffer = 262144/327680

12/02/04 11:50:42 INFO mapred.MapTask: Starting flush of map output

12/02/04 11:50:42 INFO mapred.MapTask: Finished spill 0

12/02/04 11:50:42 INFO mapred.Task: Task:attempt_local_0001_m_000000_0 is done. And i

s in the process of commiting

12/02/04 11:50:42 INFO mapred.JobClient: map 0% reduce 0%

12/02/04 11:50:44 INFO mapred.LocalJobRunner:

12/02/04 11:50:44 INFO mapred.Task: Task 'attempt_local_0001_m_000000_0' done.

Analyzing the Data with Hadoop | 25

12/02/04 11:50:44 INFO mapred.Task: Using ResourceCalculatorPlugin : null

12/02/04 11:50:44 INFO mapred.LocalJobRunner:

12/02/04 11:50:44 INFO mapred.Merger: Merging 1 sorted segments

12/02/04 11:50:44 INFO mapred.Merger: Down to the last merge-pass, with 1 segments

left of total size: 57 bytes

12/02/04 11:50:44 INFO mapred.LocalJobRunner:

12/02/04 11:50:45 INFO mapred.Task: Task:attempt_local_0001_r_000000_0 is done. And

is in the process of commiting

12/02/04 11:50:45 INFO mapred.LocalJobRunner:

12/02/04 11:50:45 INFO mapred.Task: Task attempt_local_0001_r_000000_0 is allowed to

commit now

12/02/04 11:50:45 INFO output.FileOutputCommitter: Saved output of task 'attempt_local

_0001_r_000000_0' to output

12/02/04 11:50:45 INFO mapred.JobClient: map 100% reduce 0%

12/02/04 11:50:47 INFO mapred.LocalJobRunner: reduce > reduce

12/02/04 11:50:47 INFO mapred.Task: Task 'attempt_local_0001_r_000000_0' done.

12/02/04 11:50:48 INFO mapred.JobClient: map 100% reduce 100%

12/02/04 11:50:48 INFO mapred.JobClient: Job complete: job_local_0001

12/02/04 11:50:48 INFO mapred.JobClient: Counters: 17

12/02/04 11:50:48 INFO mapred.JobClient: File Output Format Counters

12/02/04 11:50:48 INFO mapred.JobClient: Bytes Written=29

12/02/04 11:50:48 INFO mapred.JobClient: FileSystemCounters

12/02/04 11:50:48 INFO mapred.JobClient: FILE_BYTES_READ=357503

12/02/04 11:50:48 INFO mapred.JobClient: FILE_BYTES_WRITTEN=425817

12/02/04 11:50:48 INFO mapred.JobClient: File Input Format Counters

12/02/04 11:50:48 INFO mapred.JobClient: Bytes Read=529

12/02/04 11:50:48 INFO mapred.JobClient: Map-Reduce Framework

12/02/04 11:50:48 INFO mapred.JobClient: Map output materialized bytes=61

12/02/04 11:50:48 INFO mapred.JobClient: Map input records=5

12/02/04 11:50:48 INFO mapred.JobClient: Reduce shuffle bytes=0

12/02/04 11:50:48 INFO mapred.JobClient: Spilled Records=10

12/02/04 11:50:48 INFO mapred.JobClient: Map output bytes=45

12/02/04 11:50:48 INFO mapred.JobClient: Total committed heap usage (bytes)=36923

8016

12/02/04 11:50:48 INFO mapred.JobClient: SPLIT_RAW_BYTES=129

12/02/04 11:50:48 INFO mapred.JobClient: Combine input records=0

12/02/04 11:50:48 INFO mapred.JobClient: Reduce input records=5

12/02/04 11:50:48 INFO mapred.JobClient: Reduce input groups=2

12/02/04 11:50:48 INFO mapred.JobClient: Combine output records=0

12/02/04 11:50:48 INFO mapred.JobClient: Reduce output records=2

12/02/04 11:50:48 INFO mapred.JobClient: Map output records=5

hadoop

hadoopjava

HADOOP_CLASSPATHhadoop

HADOOP_CLASSPATH

26 | Chapter 2:MapReduce

job_local_0001

attempt_local_0001_m_000000_0

attempt_local_0001_r_000000_0

% cat output/part-r-00000

1949 111

1950 22

The old and the new Java MapReduce APIs

org.apache.hadoop.mapreduce.lib

MapperReducer

Analyzing the Data with Hadoop | 27

org.apache.hadoop.mapreduce

org.apache.hadoop.mapred

Context

JobConfOutputCollectorReporter

run()

MapRunnable

Job

JobClient

JobConf

Configuration

Configuration

Job

nnnnn

nnnnnnnnnnnnnnn

java.lang.Inter

ruptedException

reduce()java.lang.Iterable

java.lang.Iterator

for (VALUEIN value : values) { ... }

MaxTemperature

28 | Chapter 2:MapReduce

MapperReducer

map()reduce()

Mapper

Reducer

map()reduce()

map()reduce()@Override

public class OldMaxTemperature {

static class OldMaxTemperatureMapper extends MapReduceBase

implements Mapper<LongWritable, Text, Text, IntWritable> {

private static final int MISSING = 9999;

@Override

public void map(LongWritable key, Text value,

OutputCollector<Text, IntWritable> output, Reporter reporter)

throws IOException {

String line = value.toString();

String year = line.substring(15, 19);

int airTemperature;

if (line.charAt(87) == '+') { // parseInt doesn't like leading plus signs

airTemperature = Integer.parseInt(line.substring(88, 92));

} else {

airTemperature = Integer.parseInt(line.substring(87, 92));

}

String quality = line.substring(92, 93);

if (airTemperature != MISSING && quality.matches("[01459]")) {

output.collect(new Text(year), new IntWritable(airTemperature));

}

}

}

static class OldMaxTemperatureReducer extends MapReduceBase

implements Reducer<Text, IntWritable, Text, IntWritable> {

@Override

public void reduce(Text key, Iterator<IntWritable> values,

OutputCollector<Text, IntWritable> output, Reporter reporter)

throws IOException {

int maxValue = Integer.MIN_VALUE;

while (values.hasNext()) {

maxValue = Math.max(maxValue, values.next().get());

}

output.collect(key, new IntWritable(maxValue));

}

Analyzing the Data with Hadoop | 29

}

public static void main(String[] args) throws IOException {

if (args.length != 2) {

System.err.println("Usage: OldMaxTemperature <input path> <output path>");

System.exit(-1);

}

JobConf conf = new JobConf(OldMaxTemperature.class);

conf.setJobName("Max temperature");

FileInputFormat.addInputPath(conf, new Path(args[0]));

FileOutputFormat.setOutputPath(conf, new Path(args[1]));

conf.setMapperClass(OldMaxTemperatureMapper.class);

conf.setReducerClass(OldMaxTemperatureReducer.class);

conf.setOutputKeyClass(Text.class);

conf.setOutputValueClass(IntWritable.class);

JobClient.runJob(conf);

}

}

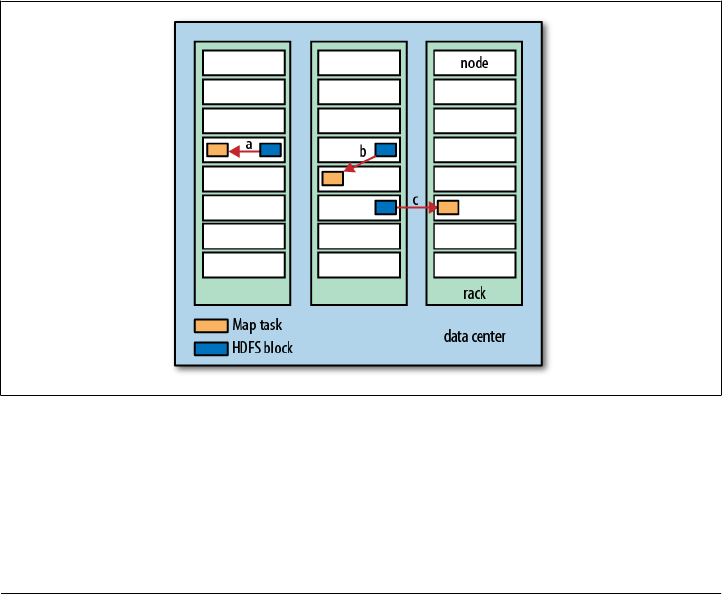

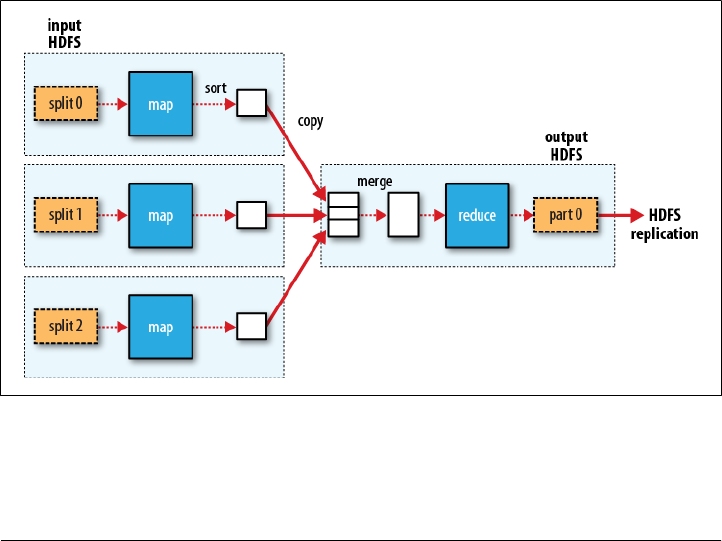

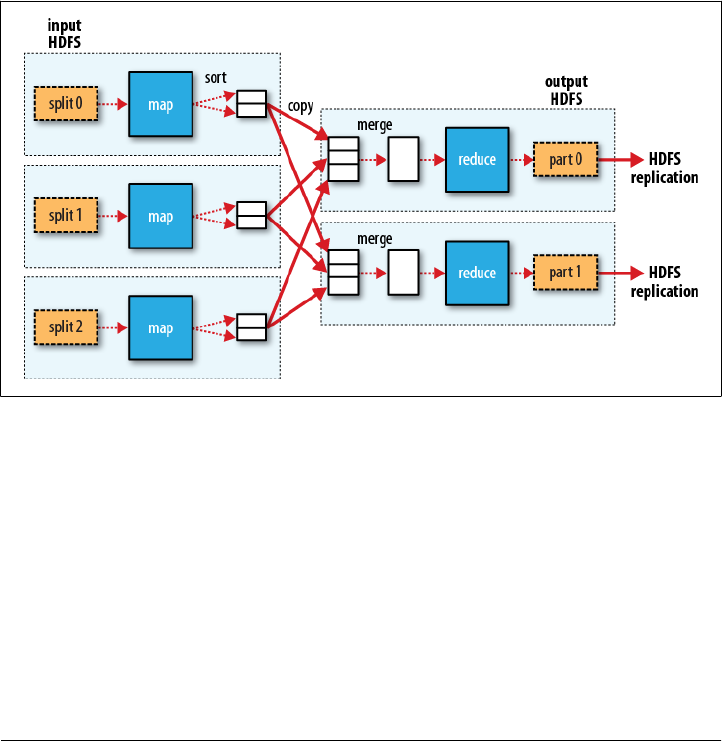

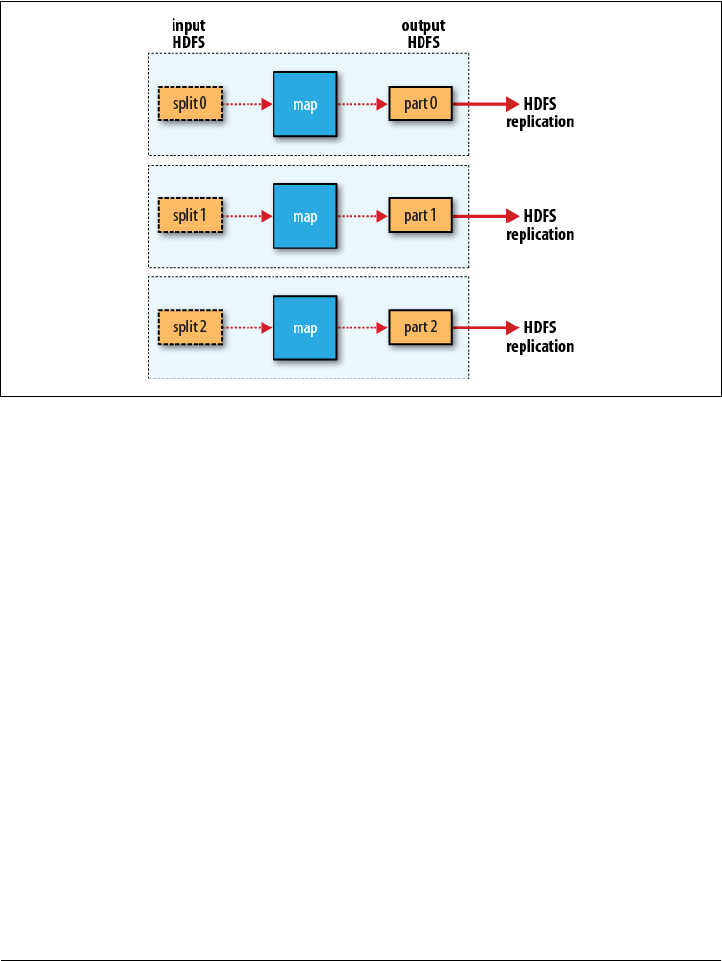

Scaling Out

Data Flow

30 | Chapter 2:MapReduce

Scaling Out | 31

32 | Chapter 2:MapReduce

Combiner Functions

Scaling Out | 33

(1950, 0)

(1950, 20)

(1950, 10)

(1950, 25)

(1950, 15)

(1950, [0, 20, 10, 25, 15])

(1950, 25)

34 | Chapter 2:MapReduce

(1950, [20, 25])

max(0, 20, 10, 25, 15) = max(max(0, 20, 10), max(25, 15)) = max(20, 25) = 25

mean(0, 20, 10, 25, 15) = 14

mean(mean(0, 20, 10), mean(25, 15)) = mean(10, 20) = 15

Specifying a combiner function

Reducer

MaxTemperatureReducer

Job

public class MaxTemperatureWithCombiner {

public static void main(String[] args) throws Exception {

if (args.length != 2) {

System.err.println("Usage: MaxTemperatureWithCombiner <input path> " +

"<output path>");

System.exit(-1);

}

Job job = new Job();

job.setJarByClass(MaxTemperatureWithCombiner.class);

job.setJobName("Max temperature");

FileInputFormat.addInputPath(job, new Path(args[0]));

FileOutputFormat.setOutputPath(job, new Path(args[1]));

job.setMapperClass(MaxTemperatureMapper.class);

job.setCombinerClass(MaxTemperatureReducer.class);

job.setReducerClass(MaxTemperatureReducer.class);

Scaling Out | 35

job.setOutputKeyClass(Text.class);

job.setOutputValueClass(IntWritable.class);

System.exit(job.waitForCompletion(true) ? 0 : 1);

}

}

Running a Distributed MapReduce Job

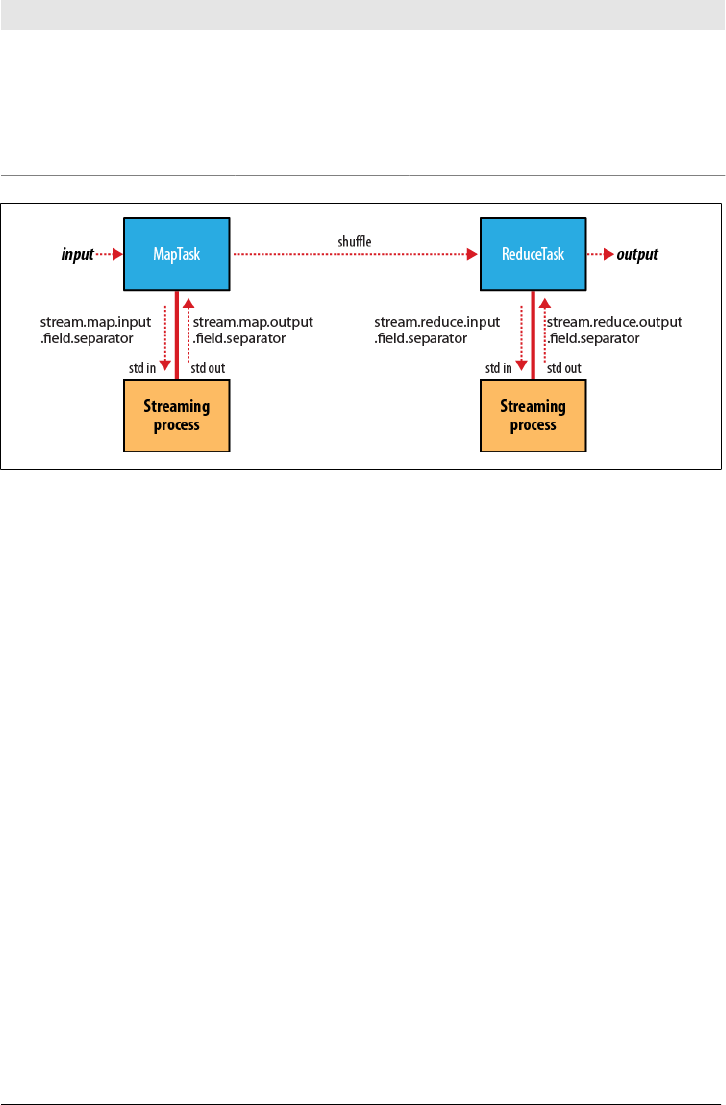

Hadoop Streaming

Ruby

#!/usr/bin/env ruby

36 | Chapter 2:MapReduce

STDIN.each_line do |line|

val = line

year, temp, q = val[15,4], val[87,5], val[92,1]

puts "#{year}\t#{temp}" if (temp != "+9999" && q =~ /[01459]/)

end

STDINIO

\tputs

map()

Mapper

Mapperclose()

% cat input/ncdc/sample.txt | ch02/src/main/ruby/max_temperature_map.rb

1950 +0000

1950 +0022

1950 -0011

1949 +0111

1949 +0078

#!/usr/bin/env ruby

last_key, max_val = nil, -1000000

STDIN.each_line do |line|

key, val = line.split("\t")

if last_key && last_key != key

puts "#{last_key}\t#{max_val}"

last_key, max_val = key, val.to_i

else

last_key, max_val = key, [max_val, val.to_i].max

Hadoop Streaming | 37

end

end

puts "#{last_key}\t#{max_val}" if last_key

last_key && last_key != key

% cat input/ncdc/sample.txt | ch02/src/main/ruby/max_temperature_map.rb | \

sort | ch02/src/main/ruby/max_temperature_reduce.rb

1949 111

1950 22

hadoop

jar

% hadoop jar $HADOOP_INSTALL/contrib/streaming/hadoop-*-streaming.jar \

-input input/ncdc/sample.txt \

-output output \

-mapper ch02/src/main/ruby/max_temperature_map.rb \

-reducer ch02/src/main/ruby/max_temperature_reduce.rb

-combiner

% hadoop jar $HADOOP_INSTALL/contrib/streaming/hadoop-*-streaming.jar \

-input input/ncdc/all \

-output output \

38 | Chapter 2:MapReduce

-mapper "ch02/src/main/ruby/max_temperature_map.rb | sort |

ch02/src/main/ruby/max_temperature_reduce.rb" \

-reducer ch02/src/main/ruby/max_temperature_reduce.rb \

-file ch02/src/main/ruby/max_temperature_map.rb \

-file ch02/src/main/ruby/max_temperature_reduce.rb

-file

Python

#!/usr/bin/env python

import re

import sys

for line in sys.stdin:

val = line.strip()

(year, temp, q) = (val[15:19], val[87:92], val[92:93])

if (temp != "+9999" and re.match("[01459]", q)):

print "%s\t%s" % (year, temp)

#!/usr/bin/env python

import sys

(last_key, max_val) = (None, -sys.maxint)

for line in sys.stdin:

(key, val) = line.strip().split("\t")

if last_key and last_key != key:

print "%s\t%s" % (last_key, max_val)

(last_key, max_val) = (key, int(val))

else:

(last_key, max_val) = (key, max(max_val, int(val)))

if last_key:

print "%s\t%s" % (last_key, max_val)

Hadoop Streaming | 39

% cat input/ncdc/sample.txt | ch02/src/main/python/max_temperature_map.py | \

sort | ch02/src/main/python/max_temperature_reduce.py

1949 111

1950 22

Hadoop Pipes

#include <algorithm>

#include <limits>

#include <stdint.h>

#include <string>

#include "hadoop/Pipes.hh"

#include "hadoop/TemplateFactory.hh"

#include "hadoop/StringUtils.hh"

class MaxTemperatureMapper : public HadoopPipes::Mapper {

public:

MaxTemperatureMapper(HadoopPipes::TaskContext& context) {

}

void map(HadoopPipes::MapContext& context) {

std::string line = context.getInputValue();

std::string year = line.substr(15, 4);

std::string airTemperature = line.substr(87, 5);

std::string q = line.substr(92, 1);

if (airTemperature != "+9999" &&

(q == "0" || q == "1" || q == "4" || q == "5" || q == "9")) {

context.emit(year, airTemperature);

}

}

};

class MapTemperatureReducer : public HadoopPipes::Reducer {

public:

MapTemperatureReducer(HadoopPipes::TaskContext& context) {

}

void reduce(HadoopPipes::ReduceContext& context) {

int maxValue = INT_MIN;

while (context.nextValue()) {

40 | Chapter 2:MapReduce

maxValue = std::max(maxValue, HadoopUtils::toInt(context.getInputValue()));

}

context.emit(context.getInputKey(), HadoopUtils::toString(maxValue));

}

};

int main(int argc, char *argv[]) {

return HadoopPipes::runTask(HadoopPipes::TemplateFactory<MaxTemperatureMapper,

MapTemperatureReducer>());

}

MapperReducerHadoopPipes

map()reduce()

MapContext ReduceContext

JobConf

MapTempera

tureReducer

HadoopUtils

MaxTemperature

MapperairTemperature

map()

main()HadoopPipes::runTask

Mapper

ReducerrunTask()Factory

MapperReducer

Compiling and Running

CC = g++

CPPFLAGS = -m32 -I$(HADOOP_INSTALL)/c++/$(PLATFORM)/include

max_temperature: max_temperature.cpp

$(CC) $(CPPFLAGS) $< -Wall -L$(HADOOP_INSTALL)/c++/$(PLATFORM)/lib -lhadooppipes \

-lhadooputils -lpthread -g -O2 -o $@

Hadoop Pipes | 41

HADOOP_INSTALL

PLATFORM

% export PLATFORM=Linux-i386-32

% make

max_temperature

% hadoop fs -put max_temperature bin/max_temperature

% hadoop fs -put input/ncdc/sample.txt sample.txt

pipes

-program

% hadoop pipes \

-D hadoop.pipes.java.recordreader=true \

-D hadoop.pipes.java.recordwriter=true \

-input sample.txt \

-output output \

-program bin/max_temperature

-Dhadoop.pipes.java.recordreader

hadoop.pipes.java.recordwritertrue

42 | Chapter 2:MapReduce

CHAPTER 3

The Hadoop Distributed Filesystem

The Design of HDFS

43

44 | Chapter 3:The Hadoop Distributed Filesystem

HDFS Concepts

Blocks

Why Is a Block in HDFS So Large?

HDFS Concepts | 45

fsck

% hadoop fsck / -files -blocks

Namenodes and Datanodes

46 | Chapter 3:The Hadoop Distributed Filesystem

HDFS Federation

ViewFileSystem

HDFS Concepts | 47

HDFS High-Availability

48 | Chapter 3:The Hadoop Distributed Filesystem

Failover and fencing

The Command-Line Interface

The Command-Line Interface | 49

fs.default.name

hdfs

localhost

dfs.replication

Basic Filesystem Operations

hadoop fs -help

% hadoop fs -copyFromLocal input/docs/quangle.txt hdfs://localhost/user/tom/

quangle.txt

fs

-copyFromLocal

hdfs://localhost

% hadoop fs -copyFromLocal input/docs/quangle.txt /user/tom/quangle.txt

% hadoop fs -copyFromLocal input/docs/quangle.txt quangle.txt

% hadoop fs -copyToLocal quangle.txt quangle.copy.txt

% md5 input/docs/quangle.txt quangle.copy.txt

MD5 (input/docs/quangle.txt) = a16f231da6b05e2ba7a339320e7dacd9

MD5 (quangle.copy.txt) = a16f231da6b05e2ba7a339320e7dacd9

50 | Chapter 3:The Hadoop Distributed Filesystem

% hadoop fs -mkdir books

% hadoop fs -ls .

Found 2 items

drwxr-xr-x - tom supergroup 0 2009-04-02 22:41 /user/tom/books

-rw-r--r-- 1 tom supergroup 118 2009-04-02 22:29 /user/tom/quangle.txt

ls -l

File Permissions in HDFS

rw

x

dfs.permissions

The Command-Line Interface | 51

Hadoop Filesystems

org.apache.hadoop.fs.FileSystem

Filesystem URI scheme Java implementation

(all under org.apache.hadoop)

Description

Local file fs.LocalFileSystem A filesystem for a locally connected disk with client-

side checksums. Use RawLocalFileSystem for a

local filesystem with no checksums. See “LocalFileSys-

tem” on page 82.

HDFS hdfs hdfs.

DistributedFileSystem

Hadoop’s distributed filesystem. HDFS is designed to work

efficiently in conjunction with MapReduce.

HFTP hftp hdfs.HftpFileSystem A filesystem providing read-only access to HDFS over

HTTP. (Despite its name, HFTP has no connection with

FTP.) Often used with distcp (see “Parallel Copying with

distcp” on page 75) to copy data between HDFS

clusters running different versions.

HSFTP hsftp hdfs.HsftpFileSystem A filesystem providing read-only access to HDFS over

HTTPS. (Again, this has no connection with FTP.)

WebHDFS webhdfs hdfs.web.WebHdfsFile

System

A filesystem providing secure read-write access to HDFS

over HTTP. WebHDFS is intended as a replacement for

HFTP and HSFTP.

HAR har fs.HarFileSystem A filesystem layered on another filesystem for archiving

files. Hadoop Archives are typically used for archiving files

in HDFS to reduce the namenode’s memory usage. See

“Hadoop Archives” on page 77.

KFS (Cloud-

Store)

kfs fs.kfs.

KosmosFileSystem

CloudStore (formerly Kosmos filesystem) is a dis-

tributed filesystem like HDFS or Google’s GFS, written in

C++. Find more information about it at

http://code.google.com/p/kosmosfs/.

FTP ftp fs.ftp.FTPFileSystem A filesystem backed by an FTP server.

S3 (native) s3n fs.s3native.

NativeS3FileSystem

A filesystem backed by Amazon S3. See http://wiki

.apache.org/hadoop/AmazonS3.

52 | Chapter 3:The Hadoop Distributed Filesystem

Filesystem URI scheme Java implementation

(all under org.apache.hadoop)

Description

S3 (block-

based)

s3 fs.s3.S3FileSystem A filesystem backed by Amazon S3, which stores files in

blocks (much like HDFS) to overcome S3’s 5 GB file size

limit.

Distributed

RAID

hdfs hdfs.DistributedRaidFi

leSystem

A “RAID” version of HDFS designed for archival storage.

For each file in HDFS, a (smaller) parity file is created,

which allows the HDFS replication to be reduced from

three to two, which reduces disk usage by 25% to 30%

while keeping the probability of data loss the same. Dis-

tributed RAID requires that you run a RaidNode daemon

on the cluster.

View viewfs viewfs.ViewFileSystem A client-side mount table for other Hadoop filesystems.

Commonly used to create mount points for federated

namenodes (see “HDFS Federation” on page 47).

% hadoop fs -ls file:///

Interfaces

FileSystem

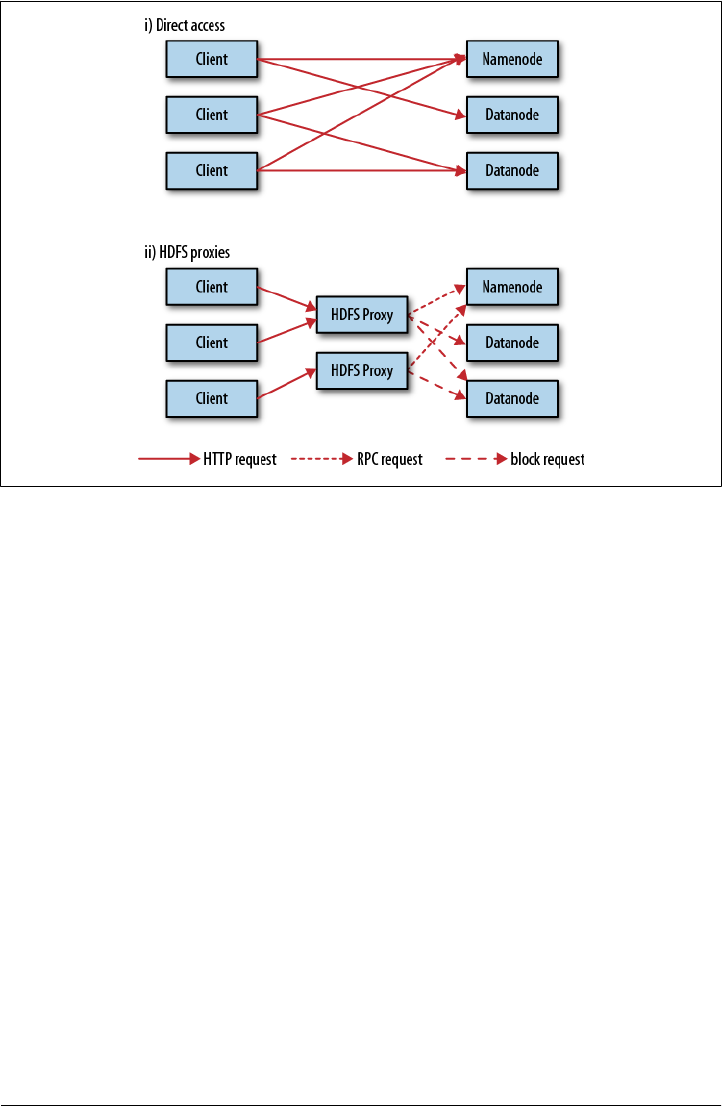

HTTP

DistributedFileSystem

Hadoop Filesystems | 53

dfs.webhdfs.enabled

FileSystem

54 | Chapter 3:The Hadoop Distributed Filesystem

C

FileSystem

FUSE

lscat

The Java Interface

FileSystem

DistributedFileSystem

FileSystem

Reading Data from a Hadoop URL

java.net.URL

InputStream in = null;

try {

FileContext

FileContext

The Java Interface | 55

in = new URL("hdfs://host/path").openStream();

// process in

} finally {

IOUtils.closeStream(in);

}

hdfs

setURLStreamHandlerFactoryURL

FsUrlStreamHandlerFactory

URLStreamHandlerFactory

cat

public class URLCat {

static {

URL.setURLStreamHandlerFactory(new FsUrlStreamHandlerFactory());

}

public static void main(String[] args) throws Exception {

InputStream in = null;

try {

in = new URL(args[0]).openStream();

IOUtils.copyBytes(in, System.out, 4096, false);

} finally {

IOUtils.closeStream(in);

}

}

}

IOUtils

finally

System.out copyBytes

System.out

56 | Chapter 3:The Hadoop Distributed Filesystem

% hadoop URLCat hdfs://localhost/user/tom/quangle.txt

On the top of the Crumpetty Tree

The Quangle Wangle sat,

But his face you could not see,

On account of his Beaver Hat.

Reading Data Using the FileSystem API

URLStreamHand

lerFactoryFileSystem

Path

java.io.File

Path

FileSystem

FileSystem

public static FileSystem get(Configuration conf) throws IOException

public static FileSystem get(URI uri, Configuration conf) throws IOException

public static FileSystem get(URI uri, Configuration conf, String user)

throws IOException

Configuration

URI

URI

getLocal()

public static LocalFileSystem getLocal(Configuration conf) throws IOException

FileSystemopen()

public FSDataInputStream open(Path f) throws IOException

public abstract FSDataInputStream open(Path f, int bufferSize) throws IOException

The Java Interface | 57

public class FileSystemCat {

public static void main(String[] args) throws Exception {

String uri = args[0];

Configuration conf = new Configuration();

FileSystem fs = FileSystem.get(URI.create(uri), conf);

InputStream in = null;

try {

in = fs.open(new Path(uri));

IOUtils.copyBytes(in, System.out, 4096, false);

} finally {

IOUtils.closeStream(in);

}

}

}

% hadoop FileSystemCat hdfs://localhost/user/tom/quangle.txt

On the top of the Crumpetty Tree

The Quangle Wangle sat,

But his face you could not see,

On account of his Beaver Hat.

FSDataInputStream

open()FileSystemFSDataInputStream

java.iojava.io.DataInputStream

package org.apache.hadoop.fs;

public class FSDataInputStream extends DataInputStream

implements Seekable, PositionedReadable {

// implementation elided

}

Seekable

getPos()

public interface Seekable {

void seek(long pos) throws IOException;

long getPos() throws IOException;

}

seek()

IOExceptionskip()java.io.InputStream

seek()

58 | Chapter 3:The Hadoop Distributed Filesystem

public class FileSystemDoubleCat {

public static void main(String[] args) throws Exception {

String uri = args[0];

Configuration conf = new Configuration();

FileSystem fs = FileSystem.get(URI.create(uri), conf);

FSDataInputStream in = null;

try {

in = fs.open(new Path(uri));

IOUtils.copyBytes(in, System.out, 4096, false);

in.seek(0); // go back to the start of the file

IOUtils.copyBytes(in, System.out, 4096, false);

} finally {

IOUtils.closeStream(in);

}

}

}

% hadoop FileSystemDoubleCat hdfs://localhost/user/tom/quangle.txt

On the top of the Crumpetty Tree

The Quangle Wangle sat,

But his face you could not see,

On account of his Beaver Hat.

On the top of the Crumpetty Tree

The Quangle Wangle sat,

But his face you could not see,

On account of his Beaver Hat.

FSDataInputStreamPositionedReadable

public interface PositionedReadable {

public int read(long position, byte[] buffer, int offset, int length)

throws IOException;

public void readFully(long position, byte[] buffer, int offset, int length)

throws IOException;

public void readFully(long position, byte[] buffer) throws IOException;

}

read()lengthposition

bufferoffset

lengthreadFully()

lengthbuffer.length

The Java Interface | 59

buffer

EOFException

FSDataInputStream

seek()

Writing Data

FileSystem

Path

public FSDataOutputStream create(Path f) throws IOException

create()

exists()

Progressable

package org.apache.hadoop.util;

public interface Progressable {

public void progress();

}

append()

public FSDataOutputStream append(Path f) throws IOException

60 | Chapter 3:The Hadoop Distributed Filesystem

progress()

public class FileCopyWithProgress {

public static void main(String[] args) throws Exception {

String localSrc = args[0];

String dst = args[1];

InputStream in = new BufferedInputStream(new FileInputStream(localSrc));

Configuration conf = new Configuration();

FileSystem fs = FileSystem.get(URI.create(dst), conf);

OutputStream out = fs.create(new Path(dst), new Progressable() {

public void progress() {

System.out.print(".");

}

});

IOUtils.copyBytes(in, out, 4096, true);

}

}

% hadoop FileCopyWithProgress input/docs/1400-8.txt hdfs://localhost/user/tom/

1400-8.txt

...............

progress()

FSDataOutputStream

create() FileSystem FSDataOutputStream

FSDataInputStream

package org.apache.hadoop.fs;

public class FSDataOutputStream extends DataOutputStream implements Syncable {

public long getPos() throws IOException {

The Java Interface | 61

// implementation elided

}

// implementation elided

}

FSDataInputStreamFSDataOutputStream

Directories

FileSystem

public boolean mkdirs(Path f) throws IOException

java.io.Filemkdirs()true

create()

Querying the Filesystem

File metadata: FileStatus

FileStatus

getFileStatus()FileSystem FileStatus

public class ShowFileStatusTest {

private MiniDFSCluster cluster; // use an in-process HDFS cluster for testing

private FileSystem fs;

@Before

public void setUp() throws IOException {

Configuration conf = new Configuration();

if (System.getProperty("test.build.data") == null) {

System.setProperty("test.build.data", "/tmp");

}

cluster = new MiniDFSCluster(conf, 1, true, null);

fs = cluster.getFileSystem();

62 | Chapter 3:The Hadoop Distributed Filesystem

OutputStream out = fs.create(new Path("/dir/file"));

out.write("content".getBytes("UTF-8"));

out.close();

}

@After

public void tearDown() throws IOException {

if (fs != null) { fs.close(); }

if (cluster != null) { cluster.shutdown(); }

}

@Test(expected = FileNotFoundException.class)

public void throwsFileNotFoundForNonExistentFile() throws IOException {

fs.getFileStatus(new Path("no-such-file"));

}

@Test

public void fileStatusForFile() throws IOException {

Path file = new Path("/dir/file");

FileStatus stat = fs.getFileStatus(file);

assertThat(stat.getPath().toUri().getPath(), is("/dir/file"));

assertThat(stat.isDir(), is(false));

assertThat(stat.getLen(), is(7L));

assertThat(stat.getModificationTime(),

is(lessThanOrEqualTo(System.currentTimeMillis())));

assertThat(stat.getReplication(), is((short) 1));

assertThat(stat.getBlockSize(), is(64 * 1024 * 1024L));

assertThat(stat.getOwner(), is("tom"));

assertThat(stat.getGroup(), is("supergroup"));

assertThat(stat.getPermission().toString(), is("rw-r--r--"));

}

@Test

public void fileStatusForDirectory() throws IOException {

Path dir = new Path("/dir");

FileStatus stat = fs.getFileStatus(dir);

assertThat(stat.getPath().toUri().getPath(), is("/dir"));

assertThat(stat.isDir(), is(true));

assertThat(stat.getLen(), is(0L));

assertThat(stat.getModificationTime(),

is(lessThanOrEqualTo(System.currentTimeMillis())));

assertThat(stat.getReplication(), is((short) 0));

assertThat(stat.getBlockSize(), is(0L));

assertThat(stat.getOwner(), is("tom"));

assertThat(stat.getGroup(), is("supergroup"));

assertThat(stat.getPermission().toString(), is("rwxr-xr-x"));

}

}

FileNotFoundException

exists()FileSys

tem

public boolean exists(Path f) throws IOException

The Java Interface | 63

Listing files

FileSystemlistStatus()

public FileStatus[] listStatus(Path f) throws IOException

public FileStatus[] listStatus(Path f, PathFilter filter) throws IOException

public FileStatus[] listStatus(Path[] files) throws IOException

public FileStatus[] listStatus(Path[] files, PathFilter filter) throws IOException

FileStatus

FileStatus

PathFilter

listStatusFileSta

tus

stat2Paths()FileUtil

FileStatusPath

public class ListStatus {

public static void main(String[] args) throws Exception {

String uri = args[0];

Configuration conf = new Configuration();

FileSystem fs = FileSystem.get(URI.create(uri), conf);

Path[] paths = new Path[args.length];

for (int i = 0; i < paths.length; i++) {

paths[i] = new Path(args[i]);

}

FileStatus[] status = fs.listStatus(paths);

Path[] listedPaths = FileUtil.stat2Paths(status);

for (Path p : listedPaths) {

System.out.println(p);

}

}

}

% hadoop ListStatus hdfs://localhost/ hdfs://localhost/user/tom

hdfs://localhost/user

hdfs://localhost/user/tom/books

hdfs://localhost/user/tom/quangle.txt

64 | Chapter 3:The Hadoop Distributed Filesystem

File patterns

FileSystem

public FileStatus[] globStatus(Path pathPattern) throws IOException

public FileStatus[] globStatus(Path pathPattern, PathFilter filter) throws

IOException

globStatus()FileStatus

PathFilter

Glob Name Matches

*asterisk Matches zero or more characters

?question mark Matches a single character

[ab] character class Matches a single character in the set {a, b}

[^ab] negated character class Matches a single character that is not in the set {a, b}

[a-b] character range Matches a single character in the (closed) range [a, b], where a is lexicographically

less than or equal to b

[^a-b] negated character range Matches a single character that is not in the (closed) range [a, b], where a is

lexicographically less than or equal to b

{a,b} alternation Matches either expression a or b

\c escaped character Matches character c when it is a metacharacter

/

2007/

12/

30/

31/

2008/

01/

01/

02/

The Java Interface | 65

Glob Expansion

/* /2007 /2008

/*/* /2007/12 /2008/01

/*/12/* /2007/12/30 /2007/12/31

/200? /2007 /2008

/200[78] /2007 /2008

/200[7-8] /2007 /2008

/200[^01234569] /2007 /2008

/*/*/{31,01} /2007/12/31 /2008/01/01

/*/*/3{0,1} /2007/12/30 /2007/12/31

/*/{12/31,01/01} /2007/12/31 /2008/01/01

PathFilter

listStatus()globStatus()FileSystem

PathFilter

package org.apache.hadoop.fs;

public interface PathFilter {

boolean accept(Path path);

}

PathFilterjava.io.FileFilterPathFile

PathFilter

public class RegexExcludePathFilter implements PathFilter {

private final String regex;

public RegexExcludePathFilter(String regex) {

this.regex = regex;

}

public boolean accept(Path path) {

return !path.toString().matches(regex);

}

}

66 | Chapter 3:The Hadoop Distributed Filesystem

fs.globStatus(new Path("/2007/*/*"), new RegexExcludeFilter("^.*/2007/12/31$"))

Path

PathFilter

Deleting Data

delete()FileSystem

public boolean delete(Path f, boolean recursive) throws IOException

frecursive

recursive true

IOException

Data Flow

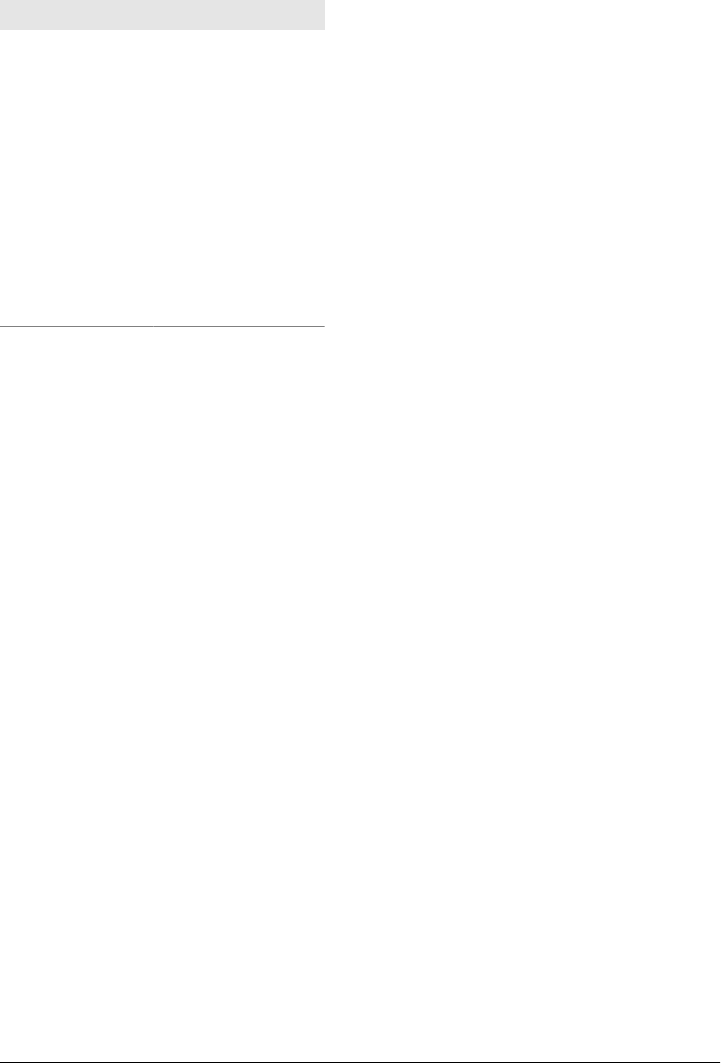

Anatomy of a File Read

open()FileSystem

DistributedFileSystem

DistributedFileSystem

Data Flow | 67

DistributedFileSystemFSDataInputStream

FSDataInputStream

DFSInputStream

read()DFSInputStream

read()

DFSInputStream

DFSInputStream

close()FSDataInputStream

DFSInputStream

DFSInputStream

DFSInput

Stream

68 | Chapter 3:The Hadoop Distributed Filesystem

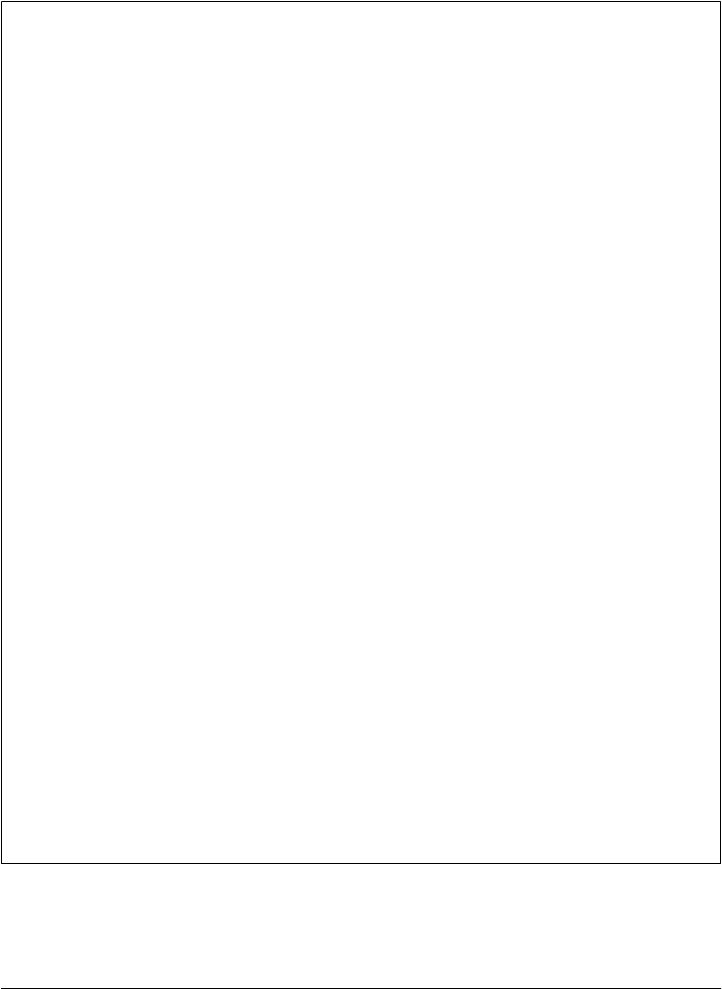

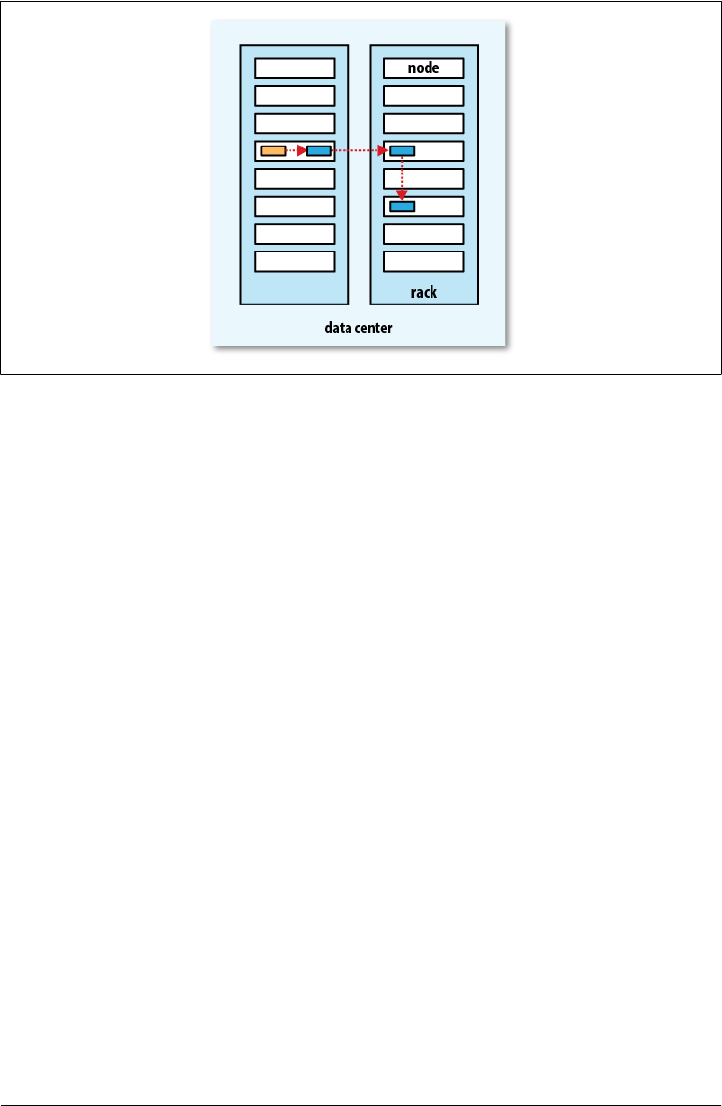

Network Topology and Hadoop

Data Flow | 69

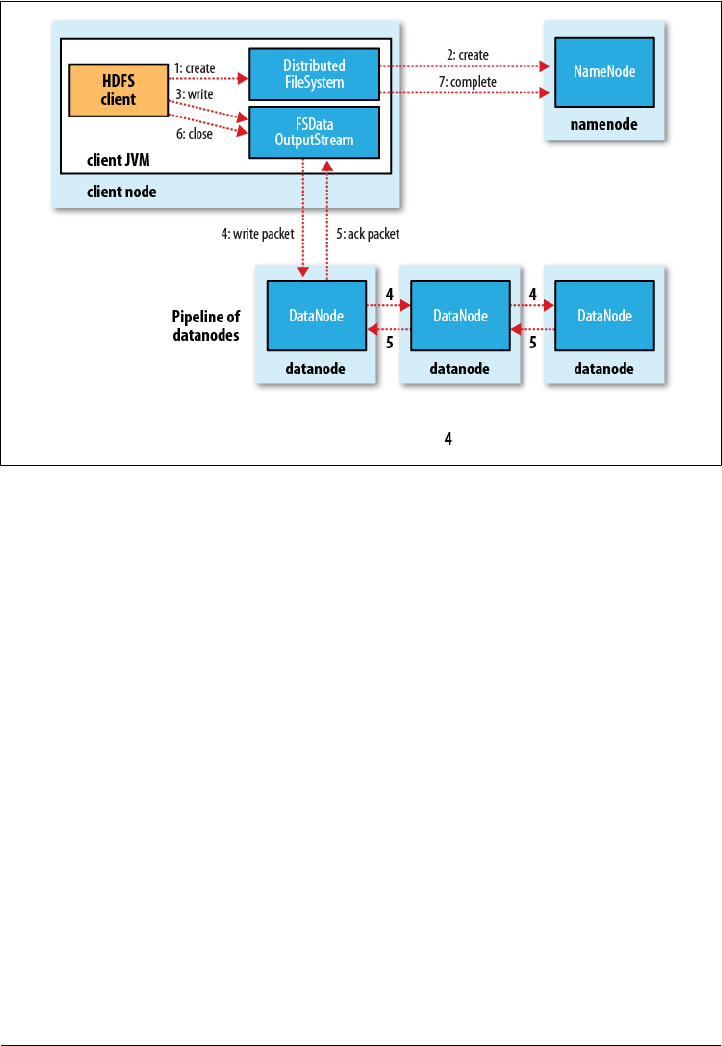

Anatomy of a File Write

create() DistributedFileSystem

DistributedFileSystem

IOExceptionDistributedFileSystemFSDataOutputStream

FSDataOutputStreamDFSOutput

Stream

DFSOutputStream

Data

Streamer

DataStreamer

70 | Chapter 3:The Hadoop Distributed Filesystem

DFSOutputStream

dfs.replication.min

dfs.replication

close()

Data Flow | 71

Data

Streamer

Replica Placement

Coherency Model

Path p = new Path("p");

fs.create(p);

assertThat(fs.exists(p), is(true));

72 | Chapter 3:The Hadoop Distributed Filesystem

Path p = new Path("p");

OutputStream out = fs.create(p);

out.write("content".getBytes("UTF-8"));

out.flush();

assertThat(fs.getFileStatus(p).getLen(), is(0L));

sync()FSDataOutputStreamsync()

Path p = new Path("p");

FSDataOutputStream out = fs.create(p);

out.write("content".getBytes("UTF-8"));

out.flush();

out.sync();

assertThat(fs.getFileStatus(p).getLen(), is(((long) "content".length())));

sync()hflush()

hsync()

fsync

hflush()

Data Flow | 73

fsync

FileOutputStream out = new FileOutputStream(localFile);

out.write("content".getBytes("UTF-8"));

out.flush(); // flush to operating system

out.getFD().sync(); // sync to disk

assertThat(localFile.length(), is(((long) "content".length())));

sync()

Path p = new Path("p");

OutputStream out = fs.create(p);

out.write("content".getBytes("UTF-8"));

out.close();

assertThat(fs.getFileStatus(p).getLen(), is(((long) "content".length())));

Consequences for application design

sync()

sync()

sync()

sync()

Data Ingest with Flume and Sqoop

tail

tail

74 | Chapter 3:The Hadoop Distributed Filesystem

Parallel Copying with distcp

% hadoop distcp hdfs://namenode1/foo hdfs://namenode2/bar

-overwrite

-update

-overwrite-update

% hadoop distcp -update hdfs://namenode1/foo hdfs://namenode2/bar/foo

-overwrite-update

Parallel Copying with distcp | 75

-m-m 1000

% hadoop distcp hftp://namenode1:50070/foo hdfs://namenode2/bar

dfs.http.address

% hadoop distcp webhdfs://namenode1:50070/foo webhdfs://namenode2:50070/bar

Keeping an HDFS Cluster Balanced

-m

1

76 | Chapter 3:The Hadoop Distributed Filesystem

Hadoop Archives

Using Hadoop Archives

% hadoop fs -lsr /my/files

-rw-r--r-- 1 tom supergroup 1 2009-04-09 19:13 /my/files/a

drwxr-xr-x - tom supergroup 0 2009-04-09 19:13 /my/files/dir

-rw-r--r-- 1 tom supergroup 1 2009-04-09 19:13 /my/files/dir/b

archive

% hadoop archive -archiveName files.har /my/files /my

% hadoop fs -ls /my

Found 2 items

drwxr-xr-x - tom supergroup 0 2009-04-09 19:13 /my/files

Hadoop Archives | 77

drwxr-xr-x - tom supergroup 0 2009-04-09 19:13 /my/files.har

% hadoop fs -ls /my/files.har

Found 3 items

-rw-r--r-- 10 tom supergroup 165 2009-04-09 19:13 /my/files.har/_index

-rw-r--r-- 10 tom supergroup 23 2009-04-09 19:13 /my/files.har/_masterindex

-rw-r--r-- 1 tom supergroup 2 2009-04-09 19:13 /my/files.har/part-0

% hadoop fs -lsr har:///my/files.har

drw-r--r-- - tom supergroup 0 2009-04-09 19:13 /my/files.har/my

drw-r--r-- - tom supergroup 0 2009-04-09 19:13 /my/files.har/my/files

-rw-r--r-- 10 tom supergroup 1 2009-04-09 19:13 /my/files.har/my/files/a

drw-r--r-- - tom supergroup 0 2009-04-09 19:13 /my/files.har/my/files/dir

-rw-r--r-- 10 tom supergroup 1 2009-04-09 19:13 /my/files.har/my/files/dir/b

% hadoop fs -lsr har:///my/files.har/my/files/dir

% hadoop fs -lsr har://hdfs-localhost:8020/my/files.har/my/files/dir

% hadoop fs -rmr /my/files.har

78 | Chapter 3:The Hadoop Distributed Filesystem

Limitations

InputFormat

Hadoop Archives | 79

CHAPTER 4

Hadoop I/O

Data Integrity

Data Integrity in HDFS

io.bytes.per.checksum

81

ChecksumException IOExcep

tion

DataBlockScanner

ChecksumException

falsesetVerify

Checksum()FileSystemopen()

-ignoreCrc-get

-copyToLocal

LocalFileSystem

LocalFileSystem

io.bytes.per.checksum

82 | Chapter 4:Hadoop I/O

LocalFileSystemChecksumException

RawLocalFileSystem Local

FileSystem

fs.file.implorg.apache.

hadoop.fs.RawLocalFileSystemRawLocalFile

System

Configuration conf = ...

FileSystem fs = new RawLocalFileSystem();

fs.initialize(null, conf);

ChecksumFileSystem

LocalFileSystemChecksumFileSystem

Checksum

FileSystemFileSystem

FileSystem rawFs = ...

FileSystem checksummedFs = new ChecksumFileSystem(rawFs);

getRawFileSystem() ChecksumFileSystem ChecksumFileSystem

getChecksumFile()

ChecksumFileSystem

reportChecksumFailure()

LocalFileSystem

Compression

Compression | 83

Compression format Tool Algorithm Filename extension Splittable?

DEFLATEaN/A DEFLATE .deflate No

gzip gzip DEFLATE .gz No

bzip2 bzip2 bzip2 .bz2 Yes

LZO lzop LZO .lzo Nob

LZ4 N/A LZ4 .lz4 No

Snappy N/A Snappy .snappy No

aDEFLATE is a compression algorithm whose standard implementation is zlib. There is no commonly available command-line tool for

producing files in DEFLATE format, as gzip is normally used. (Note that the gzip file format is DEFLATE with extra headers and a footer.)

The .deflate filename extension is a Hadoop convention.

bHowever, LZO files are splittable if they have been indexed in a preprocessing step. See page 89.

–1-9

gzip -1 file

84 | Chapter 4:Hadoop I/O

Codecs

CompressionCodec

GzipCodec

Compression format Hadoop CompressionCodec

DEFLATE org.apache.hadoop.io.compress.DefaultCodec

gzip org.apache.hadoop.io.compress.GzipCodec

bzip2 org.apache.hadoop.io.compress.BZip2Codec

LZO com.hadoop.compression.lzo.LzopCodec

LZ4 org.apache.hadoop.io.compress.Lz4Codec

Snappy org.apache.hadoop.io.compress.SnappyCodec

LzopCodeclzop

LzoCodec

Compressing and decompressing streams with CompressionCodec

CompressionCodec

createOutput

Stream(OutputStream out)CompressionOutputStream

createInputStream(InputStream in)CompressionInputStream

CompressionOutputStream CompressionInputStream java.util.

zip.DeflaterOutputStreamjava.util.zip.DeflaterInputStream

SequenceFile

Compression | 85

public class StreamCompressor {

public static void main(String[] args) throws Exception {

String codecClassname = args[0];

Class<?> codecClass = Class.forName(codecClassname);

Configuration conf = new Configuration();

CompressionCodec codec = (CompressionCodec)

ReflectionUtils.newInstance(codecClass, conf);

CompressionOutputStream out = codec.createOutputStream(System.out);

IOUtils.copyBytes(System.in, out, 4096, false);

out.finish();

}

}

CompressionCodec

ReflectionUtils

System.out

copyBytes()IOUtils

CompressionOutputStream finish()

CompressionOutputStream

StreamCompressor

GzipCodec

% echo "Text" | hadoop StreamCompressor org.apache.hadoop.io.compress.GzipCodec \

| gunzip -

Text

Inferring CompressionCodecs using CompressionCodecFactory

GzipCodec

CompressionCodecFactory

CompressionCodecgetCodec()Path

public class FileDecompressor {

public static void main(String[] args) throws Exception {

String uri = args[0];

Configuration conf = new Configuration();

FileSystem fs = FileSystem.get(URI.create(uri), conf);

Path inputPath = new Path(uri);

CompressionCodecFactory factory = new CompressionCodecFactory(conf);

86 | Chapter 4:Hadoop I/O

CompressionCodec codec = factory.getCodec(inputPath);

if (codec == null) {

System.err.println("No codec found for " + uri);

System.exit(1);

}

String outputUri =

CompressionCodecFactory.removeSuffix(uri, codec.getDefaultExtension());

InputStream in = null;

OutputStream out = null;

try {

in = codec.createInputStream(fs.open(inputPath));

out = fs.create(new Path(outputUri));

IOUtils.copyBytes(in, out, conf);

} finally {

IOUtils.closeStream(in);

IOUtils.closeStream(out);

}

}

}

removeSuffix()CompressionCodecFactory

% hadoop FileDecompressor file.gz

CompressionCodecFactory io.compression.

codecs

CompressionCodecFactory

Property name Type Default value Description

io.compression.codecs Comma-separated

Class names org.apache.hadoop.io.

compress.DefaultCodec,

org.apache.hadoop.io.

compress.GzipCodec,

org.apache.hadoop.io.

compress.BZip2Codec

A list of the

CompressionCodec classes

for compression/

decompression

Native libraries

Compression | 87

Compression format Java implementation? Native implementation?

DEFLATE Yes Yes

gzip Yes Yes

bzip2 Yes No

LZO No Yes

LZ4 No Yes

Snappy No Yes

java.library.path

hadoop.native.lib

false

CodecPool

Compressor

public class PooledStreamCompressor {

public static void main(String[] args) throws Exception {

String codecClassname = args[0];

Class<?> codecClass = Class.forName(codecClassname);

Configuration conf = new Configuration();

CompressionCodec codec = (CompressionCodec)

CodecPool.

88 | Chapter 4:Hadoop I/O

ReflectionUtils.newInstance(codecClass, conf);

Compressor compressor = null;

try {

compressor = CodecPool.getCompressor(codec);

CompressionOutputStream out =

codec.createOutputStream(System.out, compressor);

IOUtils.copyBytes(System.in, out, 4096, false);

out.finish();

} finally {

CodecPool.returnCompressor(compressor);

}

}

}

CompressorCompressionCodec

createOutputStream()finally

IOException

Compression and Input Splits

Compression | 89

Which Compression Format Should I Use?

Using Compression in MapReduce

mapred.output.compress true mapred.output.compression.codec

FileOutputFormat

public class MaxTemperatureWithCompression {

public static void main(String[] args) throws Exception {

90 | Chapter 4:Hadoop I/O

if (args.length != 2) {

System.err.println("Usage: MaxTemperatureWithCompression <input path> " +

"<output path>");

System.exit(-1);

}

Job job = new Job();

job.setJarByClass(MaxTemperature.class);

FileInputFormat.addInputPath(job, new Path(args[0]));

FileOutputFormat.setOutputPath(job, new Path(args[1]));

job.setOutputKeyClass(Text.class);

job.setOutputValueClass(IntWritable.class);

FileOutputFormat.setCompressOutput(job, true);

FileOutputFormat.setOutputCompressorClass(job, GzipCodec.class);

job.setMapperClass(MaxTemperatureMapper.class);

job.setCombinerClass(MaxTemperatureReducer.class);

job.setReducerClass(MaxTemperatureReducer.class);

System.exit(job.waitForCompletion(true) ? 0 : 1);

}

}

% hadoop MaxTemperatureWithCompression input/ncdc/sample.txt.gz output

% gunzip -c output/part-r-00000.gz

1949 111

1950 22

mapred.output.com

pression.type

RECORD BLOCK

SequenceFileOutputFormatsetOut

putCompressionType()

Tool

Compression | 91

Property name Type Default value Description

mapred.output.com

press

boolean false Compress outputs

mapred.output.com

pression.

codec

Class name org.apache.hadoop.io.

compress.DefaultCodec

The compression codec to use for out-

puts

mapred.output.com

pression.

type

String RECORD The type of compression to use for Se-

quenceFile outputs: NONE, RECORD, or

BLOCK

Compressing map output

Property name Type Default value Description

mapred.compress.map. output boolean false Compress map outputs

mapred.map.output.

compression.codec

Class org.apache.hadoop.io.

compress.DefaultCodec

The compression codec to use for

map outputs

Configuration conf = new Configuration();

conf.setBoolean("mapred.compress.map.output", true);

conf.setClass("mapred.map.output.compression.codec", GzipCodec.class,

CompressionCodec.class);

Job job = new Job(conf);

JobConf

conf.setCompressMapOutput(true);

conf.setMapOutputCompressorClass(GzipCodec.class);

92 | Chapter 4:Hadoop I/O

Serialization

Serialization | 93

The Writable Interface

DataOutput

DataInput

package org.apache.hadoop.io;

import java.io.DataOutput;

import java.io.DataInput;

import java.io.IOException;

public interface Writable {

void write(DataOutput out) throws IOException;

void readFields(DataInput in) throws IOException;

}

Writable

IntWritableint

set()

IntWritable writable = new IntWritable();

writable.set(163);

IntWritable writable = new IntWritable(163);

IntWritable

java.io.ByteArrayOutputStreamjava.io.DataOutputStream

java.io.DataOutput

public static byte[] serialize(Writable writable) throws IOException {

ByteArrayOutputStream out = new ByteArrayOutputStream();

DataOutputStream dataOut = new DataOutputStream(out);

writable.write(dataOut);

dataOut.close();

return out.toByteArray();

}

byte[] bytes = serialize(writable);

assertThat(bytes.length, is(4));

java.io.DataOutput

StringUtils

assertThat(StringUtils.byteToHexString(bytes), is("000000a3"));

94 | Chapter 4:Hadoop I/O

Writable

public static byte[] deserialize(Writable writable, byte[] bytes)

throws IOException {

ByteArrayInputStream in = new ByteArrayInputStream(bytes);

DataInputStream dataIn = new DataInputStream(in);

writable.readFields(dataIn);

dataIn.close();

return bytes;

}

IntWritabledeserialize()

get()

IntWritable newWritable = new IntWritable();

deserialize(newWritable, bytes);

assertThat(newWritable.get(), is(163));

WritableComparable and comparators

IntWritableWritableComparable

Writablejava.lang.Comparable

package org.apache.hadoop.io;

public interface WritableComparable<T> extends Writable, Comparable<T> {

}

RawComparatorComparator

package org.apache.hadoop.io;

import java.util.Comparator;

public interface RawComparator<T> extends Comparator<T> {

public int compare(byte[] b1, int s1, int l1, byte[] b2, int s2, int l2);

}

IntWritablecompare()

b1b2

s1s2l1l2

WritableComparator RawComparator

WritableComparable

compare()

compare()

Serialization | 95

RawComparatorWritable

IntWritable

RawComparator<IntWritable> comparator = WritableComparator.get(IntWritable.class);

IntWritable

IntWritable w1 = new IntWritable(163);

IntWritable w2 = new IntWritable(67);

assertThat(comparator.compare(w1, w2), greaterThan(0));

byte[] b1 = serialize(w1);

byte[] b2 = serialize(w2);

assertThat(comparator.compare(b1, 0, b1.length, b2, 0, b2.length),

greaterThan(0));

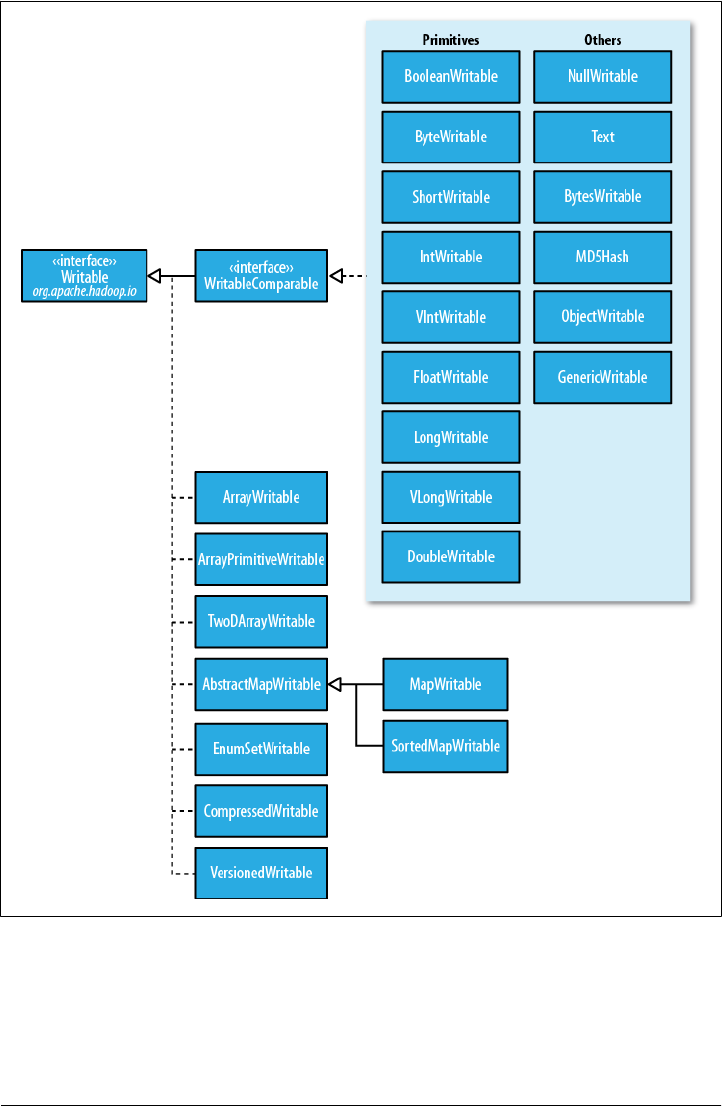

Writable Classes

Writableorg.apache.hadoop.io

Writable wrappers for Java primitives

Writable

charIntWritableget()set()

Java primitive Writable implementation Serialized size (bytes)

boolean BooleanWritable 1

byte ByteWritable 1

short ShortWritable 2

int IntWritable 4

VIntWritable 1–5

float FloatWritable 4

long LongWritable 8

VLongWritable 1–9

double DoubleWritable 8

96 | Chapter 4:Hadoop I/O

IntWritable LongWritable VIntWritable

VLongWritable

Serialization | 97

byte[] data = serialize(new VIntWritable(163));

assertThat(StringUtils.byteToHexString(data), is("8fa3"));

VIntWritableVLongWritable

long

Text

TextWritableWritable

java.lang.StringTextUTF8

Textint

Text

TextStringText

charString

charAt()

Text t = new Text("hadoop");

assertThat(t.getLength(), is(6));

assertThat(t.getBytes().length, is(6));

assertThat(t.charAt(2), is((int) 'd'));

assertThat("Out of bounds", t.charAt(100), is(-1));

charAt() int

StringcharTextfind()

StringindexOf()

Text t = new Text("hadoop");

assertThat("Find a substring", t.find("do"), is(2));

assertThat("Finds first 'o'", t.find("o"), is(3));

assertThat("Finds 'o' from position 4 or later", t.find("o", 4), is(4));

assertThat("No match", t.find("pig"), is(-1));

Indexing.

98 | Chapter 4:Hadoop I/O

TextString

Unicode code point U+0041 U+00DF U+6771 U+10400

Name LATIN CAPITAL

LETTER A

LATIN SMALL LETTER

SHARP S

N/A (a unified

Han ideograph)

DESERET CAPITAL LETTER

LONG I

UTF-8 code units 41 c3 9f e6 9d b1 f0 90 90 80

Java representation \u0041 \u00DF \u6771 \uuD801\uDC00

char char

StringText

public class StringTextComparisonTest {

@Test

public void string() throws UnsupportedEncodingException {

String s = "\u0041\u00DF\u6771\uD801\uDC00";

assertThat(s.length(), is(5));

assertThat(s.getBytes("UTF-8").length, is(10));

assertThat(s.indexOf("\u0041"), is(0));

assertThat(s.indexOf("\u00DF"), is(1));

assertThat(s.indexOf("\u6771"), is(2));

assertThat(s.indexOf("\uD801\uDC00"), is(3));

assertThat(s.charAt(0), is('\u0041'));

assertThat(s.charAt(1), is('\u00DF'));

assertThat(s.charAt(2), is('\u6771'));

assertThat(s.charAt(3), is('\uD801'));

assertThat(s.charAt(4), is('\uDC00'));

assertThat(s.codePointAt(0), is(0x0041));

assertThat(s.codePointAt(1), is(0x00DF));

assertThat(s.codePointAt(2), is(0x6771));

assertThat(s.codePointAt(3), is(0x10400));

}

@Test

public void text() {

Text t = new Text("\u0041\u00DF\u6771\uD801\uDC00");

Unicode.

Serialization | 99

assertThat(t.getLength(), is(10));

assertThat(t.find("\u0041"), is(0));

assertThat(t.find("\u00DF"), is(1));

assertThat(t.find("\u6771"), is(3));

assertThat(t.find("\uD801\uDC00"), is(6));

assertThat(t.charAt(0), is(0x0041));

assertThat(t.charAt(1), is(0x00DF));

assertThat(t.charAt(3), is(0x6771));

assertThat(t.charAt(6), is(0x10400));

}

}

Stringchar

Text

indexOf()String

charfind()Text

charAt()Stringchar

code

PointAt()char

intcharAt()Text

codePointAt()String

Text

Textjava.nio.ByteBuffer

bytesToCodePoint()Text

int

bytesToCodePoint()

public class TextIterator {

public static void main(String[] args) {

Text t = new Text("\u0041\u00DF\u6771\uD801\uDC00");

ByteBuffer buf = ByteBuffer.wrap(t.getBytes(), 0, t.getLength());

int cp;

while (buf.hasRemaining() && (cp = Text.bytesToCodePoint(buf)) != -1) {

System.out.println(Integer.toHexString(cp));

}

}

}

Iteration.

100 | Chapter 4:Hadoop I/O

% hadoop TextIterator

41

df

6771

10400

StringTextWritable

NullWritable

Textset()

Text t = new Text("hadoop");

t.set("pig");

assertThat(t.getLength(), is(3));

assertThat(t.getBytes().length, is(3));

getBytes()

getLength()

Text t = new Text("hadoop");

t.set(new Text("pig"));

assertThat(t.getLength(), is(3));

assertThat("Byte length not shortened", t.getBytes().length,

is(6));

getLength()

getBytes()

Text

java.lang.StringTextString

toString()

assertThat(new Text("hadoop").toString(), is("hadoop"));

BytesWritable

BytesWritable

000000020305

BytesWritable b = new BytesWritable(new byte[] { 3, 5 });

byte[] bytes = serialize(b);

assertThat(StringUtils.byteToHexString(bytes), is("000000020305"));

BytesWritableset()

TextgetBytes()Byte

sWritable

BytesWritable BytesWritable get

Length()

b.setCapacity(11);

assertThat(b.getLength(), is(2));

assertThat(b.getBytes().length, is(11));

Mutability.

Resorting to String.

Serialization | 101

NullWritable

NullWritableWritable

NullWritable

NullWritable

SequenceFile

NullWritable.get()

ObjectWritable and GenericWritable

ObjectWritableString

enumWritablenull

ObjectWritable

SequenceFile

ObjectWritable ObjectWritable

GenericWritable

Writable collections

Writable org.apache.hadoop.io Array

Writable ArrayPrimitiveWritable TwoDArrayWritable MapWritable

SortedMapWritableEnumSetWritable

ArrayWritable TwoDArrayWritable Writable

Writable

ArrayWritableTwoDArrayWritable

ArrayWritable writable = new ArrayWritable(Text.class);

WritableSequenceFile

ArrayWritableTwoDAr

rayWritable

public class TextArrayWritable extends ArrayWritable {

public TextArrayWritable() {

super(Text.class);

}

}

102 | Chapter 4:Hadoop I/O

ArrayWritableTwoDArrayWritableget()set()

toArray()

ArrayPrimitiveWritable

set()

MapWritableSortedMapWritablejava.util.Map<Writable,

Writable>java.util.SortedMap<WritableComparable, Writable>

org.apache.hadoop.io

Writable

MapWritableSortedMapWritable

byte

WritableMapWritableSortedMapWritable

MapWritable

MapWritable src = new MapWritable();

src.put(new IntWritable(1), new Text("cat"));

src.put(new VIntWritable(2), new LongWritable(163));

MapWritable dest = new MapWritable();

WritableUtils.cloneInto(dest, src);

assertThat((Text) dest.get(new IntWritable(1)), is(new Text("cat")));

assertThat((LongWritable) dest.get(new VIntWritable(2)), is(new

LongWritable(163)));

Writable

MapWritableSortedMapWritable

NullWritableEnumSetWritable

WritableArrayWritable

WritableGenericWritable

ArrayWritableListWritable

MapWritable

Implementing a Custom Writable

Writable

Writable

Writable

Writable

Writable

Serialization | 103

Writable

TextPair

import java.io.*;

import org.apache.hadoop.io.*;

public class TextPair implements WritableComparable<TextPair> {

private Text first;

private Text second;

public TextPair() {

set(new Text(), new Text());

}

public TextPair(String first, String second) {

set(new Text(first), new Text(second));

}

public TextPair(Text first, Text second) {

set(first, second);

}

public void set(Text first, Text second) {

this.first = first;

this.second = second;

}

public Text getFirst() {

return first;

}

public Text getSecond() {

return second;

}

@Override

public void write(DataOutput out) throws IOException {

first.write(out);

second.write(out);

}

@Override

public void readFields(DataInput in) throws IOException {

first.readFields(in);

second.readFields(in);

}

@Override

public int hashCode() {

return first.hashCode() * 163 + second.hashCode();

104 | Chapter 4:Hadoop I/O

}

@Override

public boolean equals(Object o) {

if (o instanceof TextPair) {

TextPair tp = (TextPair) o;

return first.equals(tp.first) && second.equals(tp.second);

}

return false;

}

@Override

public String toString() {

return first + "\t" + second;

}

@Override

public int compareTo(TextPair tp) {

int cmp = first.compareTo(tp.first);

if (cmp != 0) {

return cmp;

}

return second.compareTo(tp.second);

}

}

Text

first second

Writable

readFields()

write()readFields()

TextPairwrite()Text

TextreadFields()

Text DataOutput

DataInput

Writable

hashCode() equals() toString() java.lang.Object hash

Code()HashPartitioner

WritableTextOutputFormat

toString()TextOutputFormat

toString()Text

PairText

Serialization | 105

TextPairWritableComparable

compareTo()

TextPairTextArrayWrita

bleText

TextArrayWritableWritableWritableComparable

Implementing a RawComparator for speed

TextPair

TextPair

compareTo()

TextPair

TextPair Text

Text

TextTextRawCompara

tor

TextPair

public static class Comparator extends WritableComparator {

private static final Text.Comparator TEXT_COMPARATOR = new Text.Comparator();

public Comparator() {

super(TextPair.class);

}

@Override

public int compare(byte[] b1, int s1, int l1,

byte[] b2, int s2, int l2) {

try {

int firstL1 = WritableUtils.decodeVIntSize(b1[s1]) + readVInt(b1, s1);

int firstL2 = WritableUtils.decodeVIntSize(b2[s2]) + readVInt(b2, s2);

int cmp = TEXT_COMPARATOR.compare(b1, s1, firstL1, b2, s2, firstL2);

if (cmp != 0) {

return cmp;

}

return TEXT_COMPARATOR.compare(b1, s1 + firstL1, l1 - firstL1,

b2, s2 + firstL2, l2 - firstL2);

} catch (IOException e) {

throw new IllegalArgumentException(e);

}

}

}

106 | Chapter 4:Hadoop I/O

static {

WritableComparator.define(TextPair.class, new Comparator());

}

WritableComparator RawComparator

firstL1 firstL2

Text

decodeVIntSize()WritableUtils

readVInt()

TextPair

Custom comparators

TextPair

Writableorg.apache.hadoop.io

WritableUtils

RawComparator

TextPair

FirstComparator

compare() compare()

public static class FirstComparator extends WritableComparator {

private static final Text.Comparator TEXT_COMPARATOR = new Text.Comparator();

public FirstComparator() {

super(TextPair.class);

}

@Override

public int compare(byte[] b1, int s1, int l1,

byte[] b2, int s2, int l2) {

try {

int firstL1 = WritableUtils.decodeVIntSize(b1[s1]) + readVInt(b1, s1);

int firstL2 = WritableUtils.decodeVIntSize(b2[s2]) + readVInt(b2, s2);

return TEXT_COMPARATOR.compare(b1, s1, firstL1, b2, s2, firstL2);

} catch (IOException e) {

throw new IllegalArgumentException(e);

Serialization | 107

}

}

@Override

public int compare(WritableComparable a, WritableComparable b) {

if (a instanceof TextPair && b instanceof TextPair) {

return ((TextPair) a).first.compareTo(((TextPair) b).first);

}

return super.compare(a, b);

}

}

Serialization Frameworks

Writable

Serialization

org.apache.hadoop.io.serializer WritableSerialization

SerializationWritable

SerializationSerializer

Deserializer

io.serializations

Serializationorg.apache.hadoop.io.seri

alizer.WritableSerialization

Writable

JavaSerialization

Integer

String

Why Not Use Java Object Serialization?

108 | Chapter 4:Hadoop I/O

java.io.Serializable

java.io.Externalizable

Serialization IDL

org.apache.hadoop.record

Serialization | 109

Avro

110 | Chapter 4:Hadoop I/O

Avro Data Types and Schemas

type

{ "type": "null" }

Type Description Schema

null The absence of a value "null"

boolean A binary value "boolean"

int 32-bit signed integer "int"

long 64-bit signed integer "long"

float Single-precision (32-bit) IEEE 754 floating-point number "float"

double Double-precision (64-bit) IEEE 754 floating-point number "double"

Avro | 111

Type Description Schema

bytes Sequence of 8-bit unsigned bytes "bytes"

string Sequence of Unicode characters "string"

Type Description Schema example

array An ordered collection of objects. All objects in a partic-

ular array must have the same schema.

{

"type": "array",

"items": "long"

}

map An unordered collection of key-value pairs. Keys must

be strings and values may be any type, although within

a particular map, all values must have the same schema.

{

"type": "map",

"values": "string"

}

record A collection of named fields of any type. {

"type": "record",

"name": "WeatherRecord",

"doc": "A weather reading.",

"fields": [

{"name": "year", "type": "int"},

{"name": "temperature", "type": "int"},

{"name": "stationId", "type": "string"}

]

}

enum A set of named values. {

"type": "enum",

"name": "Cutlery",

"doc": "An eating utensil.",

"symbols": ["KNIFE", "FORK", "SPOON"]

}

fixed A fixed number of 8-bit unsigned bytes. {

"type": "fixed",

"name": "Md5Hash",

"size": 16

}

union A union of schemas. A union is represented by a JSON

array, where each element in the array is a schema.

Data represented by a union must match one of the

schemas in the union.

[

"null",

"string",

{"type": "map", "values": "string"}

]

double

doublefloatFloat

112 | Chapter 4:Hadoop I/O

recordenumfixed

namenamespace

stringStringUtf8

Utf8

Utf8

String

Utf8

Utf8java.lang.CharSequence

Utf8 String

toString()

String

avro.java.stringString

{ "type": "string", "avro.java.string": "String" }

String

stringType

String

String

Avro type Generic Java mapping Specific Java mapping Reflect Java mapping

null null type

Avro | 113

Avro type Generic Java mapping Specific Java mapping Reflect Java mapping

boolean boolean

int int short or int

long long

float float

double double

bytes java.nio.ByteBuffer Array of byte

string org.apache.avro.

util.Utf8

or java.lang.String

java.lang.String

array org.apache.avro.

generic.GenericArray

Array or java.util.Collection

map java.util.Map

record org.apache.avro.

generic.Generic

Record

Generated class implementing

org.apache.avro.

specific.Specific

Record.

Arbitrary user class with a zero-

argument constructor. All inherited

nontransient instance fields are used.

enum java.lang.String Generated Java enum. Arbitrary Java enum.

fixed org.apache.avro.

generic.GenericFixed

Generated class implementing

org.apache.avro.

specific.SpecificFixed.

org.apache.avro.

generic.GenericFixed

union java.lang.Object

In-Memory Serialization and Deserialization

{

"type": "record",

"name": "StringPair",

"doc": "A pair of strings.",

"fields": [

{"name": "left", "type": "string"},

{"name": "right", "type": "string"}

]

}

114 | Chapter 4:Hadoop I/O

.avsc

Schema.Parser parser = new Schema.Parser();

Schema schema = parser.parse(getClass().getResourceAsStream("StringPair.avsc"));

GenericRecord datum = new GenericData.Record(schema);

datum.put("left", "L");

datum.put("right", "R");

ByteArrayOutputStream out = new ByteArrayOutputStream();

DatumWriter<GenericRecord> writer = new GenericDatumWriter<GenericRecord>(schema);

Encoder encoder = EncoderFactory.get().binaryEncoder(out, null);

writer.write(datum, encoder);

encoder.flush();

out.close();

DatumWriter Encoder

DatumWriterEncoder

GenericDatumWriter

GenericRecordEncodernull

write()

GenericDatumWriter

write()

DatumReader<GenericRecord> reader = new GenericDatumReader<GenericRecord>(schema);

Decoder decoder = DecoderFactory.get().binaryDecoder(out.toByteArray(), null);

GenericRecord result = reader.read(null, decoder);

assertThat(result.get("left").toString(), is("L"));

assertThat(result.get("right").toString(), is("R"));

nullbinaryDecoder()read()

result.get("left")result.get("left")Utf8

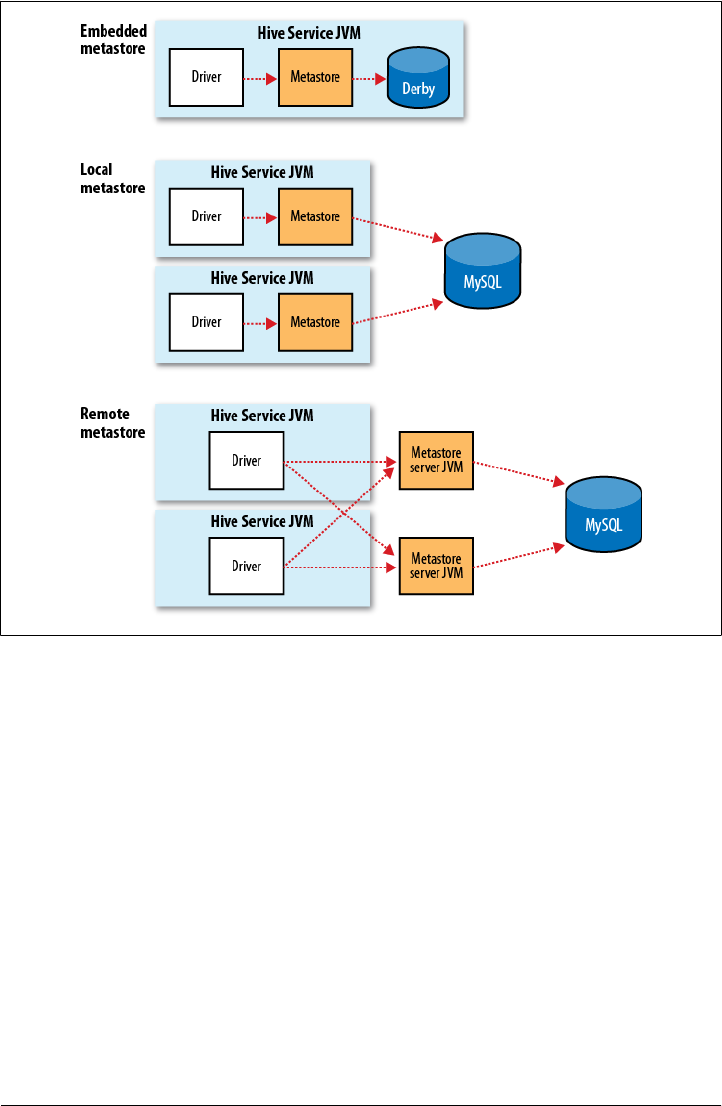

StringtoString()