SG242580 IP Network Design Guide

IP%20Network%20Design%20Guide

User Manual:

Open the PDF directly: View PDF ![]() .

.

Page Count: 324 [warning: Documents this large are best viewed by clicking the View PDF Link!]

- Contents

- Preface

- Chapter 1. Introduction

- Chapter 2. The Network Infrastructure

- Chapter 3. Address, Name and Network Management

- 3.1 Address Management

- 3.2 Address Assignment

- 3.3 Name Management

- 3.3.1 Static Files

- 3.3.2 The Domain Name System (DNS)

- 3.3.3 Dynamic Domain Name System (DDNS)

- 3.3.4 DNS Security

- 3.3.5 Does The Network Need DNS?

- 3.3.6 Domain Administration

- 3.3.7 A Few Words on Creating Subdomains

- 3.3.8 A Note on Naming Infrastructure

- 3.3.9 Registering An Organization’s Domain Name

- 3.3.10 Dynamic DNS Names (DDNS)

- 3.3.11 Microsoft Windows Considerations

- 3.3.12 Final Word On DNS

- 3.4 Network Management

- Chapter 4. IP Routing and Design

- Chapter 5. Remote Access

- 5.1 Remote Access Environments

- 5.2 Remote Access Technologies

- 5.2.1 Remote Control Approach

- 5.2.2 Remote Client Approach

- 5.2.3 Remote Node Approach

- 5.2.4 Remote Dial Access

- 5.2.5 Dial Scenario Design

- 5.2.6 Remote Access Authentication Protocols

- 5.2.7 Point-to-Point Tunneling Protocol (PPTP)

- 5.2.8 Layer 2 Forwarding (L2F)

- 5.2.9 Layer 2 Tunneling Protocol (L2TP)

- 5.2.10 VPN Remote User Access

- Chapter 6. IP Security

- Chapter 7. Multicasting and Quality of Service

- Chapter 8. Internetwork Design Study

- Appendix A. Voice over IP

- Appendix B. IBM TCP/IP Products Functional Overview

- Appendix C. Special Notices

- Appendix D. Related Publications

- How to Get ITSO Redbooks

- List of Abbreviations

- Index

- ITSO Redbook Evaluation

IPNetworkDesignGuide

Martin W. Murhammer, Kok-Keong Lee, Payam Motallebi,

Paolo Borghi, Karl Wozabal

International Technical Support Organization

SG24-2580-01

http://www.redbooks.ibm.com

International Technical Support Organization SG24-2580-01

IP Network Design Guide

June 1999

© Copyright International Business Machines Corporation 1995 1999. All rights reserved.

Note to U.S Government Users - Documentation related to restricted rights - Use, duplication or disclosure is subject to restrictions

set forth in GSA ADP Schedule Contract with IBM Corp.

Second Edition (June 1999)

This edition applies to Transmission Control Protocol/Internet Protocol (TCP/IP) in general and selected IBM and

OEM implementations thereof.

Comments may be addressed to:

IBM Corporation, International Technical Support Organization

Dept. HZ8 Building 678

P.O. Box 12195

Research Triangle Park, NC 27709-2195

When you send information to IBM, you grant IBM a non-exclusive right to use or distribute the information in any way

it believes appropriate without incurring any obligation to you.

Before using this information and the product it supports, be sure to read the general information in Appendix C,

“Special Notices” on page 287.

Take Note!

© Copyright IBM Corp. 1995 1999 iii

Contents

Preface ......................................................ix

How This Book Is Organized . .........................................ix

The Team That Wrote This Redbook . ...................................x

CommentsWelcome ................................................xi

Chapter 1. Introduction..........................................1

1.1 The Internet Model . . . ........................................1

1.1.1 A Brief History of the Internet and IP Technologies . . . ............1

1.1.2 The Open Systems Interconnection (OSI) Model.................2

1.1.3 The TCP/IP Model........................................4

1.1.4 TheNeedforDesigninIPNetworks..........................5

1.1.5 DesigninganIPNetwork...................................6

1.2 ApplicationConsiderations....................................11

1.2.1 Bandwidth Requirements .................................11

1.2.2 Performance Requirements................................12

1.2.3 Protocols Required ......................................12

1.2.4 QualityofService/TypeofService(QoS/ToS)..................12

1.2.5 SensitivitytoPacketLossandDelay.........................13

1.2.6 Multicast..............................................13

1.2.7 Proxy-Enabled . . .......................................13

1.2.8 Directory Needs . .......................................13

1.2.9 DistributedApplications...................................14

1.2.10 Scalability ............................................14

1.2.11 Security..............................................14

1.3 PlatformConsiderations......................................14

1.4 InfrastructureConsiderations ..................................16

1.5 ThePerfectNetwork.........................................17

Chapter 2. The Network Infrastructure ............................19

2.1 Technology ................................................20

2.1.1 TheBasics ............................................20

2.1.2 LAN Technologies .......................................22

2.1.3 WAN Technologies ......................................31

2.1.4 Asynchronous Transfer Mode (ATM). . . ......................47

2.1.5 FastInternetAccess.....................................51

2.1.6 WirelessIP............................................55

2.2 The Connecting Devices ......................................57

2.2.1 Hub..................................................57

2.2.2 Bridge................................................58

2.2.3 Router................................................60

2.2.4 Switch................................................62

2.3 ATM Versus Switched High-Speed LAN ..........................67

2.4 FactorsThatAffectaNetworkDesign............................68

2.4.1 SizeMatters...........................................68

2.4.2 Geographies ...........................................68

2.4.3 Politics ...............................................68

2.4.4 TypesofApplication.....................................68

2.4.5 NeedForFaultTolerance.................................69

2.4.6 ToSwitchorNottoSwitch ................................69

2.4.7 Strategy ..............................................69

2.4.8 CostConstraints........................................69

iv IP Network Design Guide

2.4.9 Standards . . . .........................................69

Chapter 3. Address, Name and Network Management ............... 71

3.1 Address Management . . . ....................................71

3.1.1 IPAddressesandAddressClasses.........................71

3.1.2 SpecialCaseAddresses .................................73

3.1.3 Subnets ..............................................74

3.1.4 IPAddressRegistration.................................. 79

3.1.5 IP Address Exhaustion...................................80

3.1.6 ClasslessInter-DomainRouting(CIDR)......................81

3.1.7 The Next Generation of the Internet Address IPv6, IPng . ........ 83

3.1.8 Address Management Design Considerations . . . ..............83

3.2 AddressAssignment........................................ 86

3.2.1 Static................................................86

3.2.2 ReverseAddressResolutionProtocol(RARP).................86

3.2.3 BootstrapProtocol(BootP) ............................... 86

3.2.4 Dynamic Host Configuration Protocol (DHCP) . . . ..............87

3.3 Name Management .........................................89

3.3.1 StaticFiles............................................ 89

3.3.2 TheDomainNameSystem(DNS)..........................90

3.3.3 Dynamic Domain Name System (DDNS) . . .................. 104

3.3.4 DNSSecurity......................................... 104

3.3.5 DoesTheNetworkNeedDNS?........................... 106

3.3.6 DomainAdministration.................................. 107

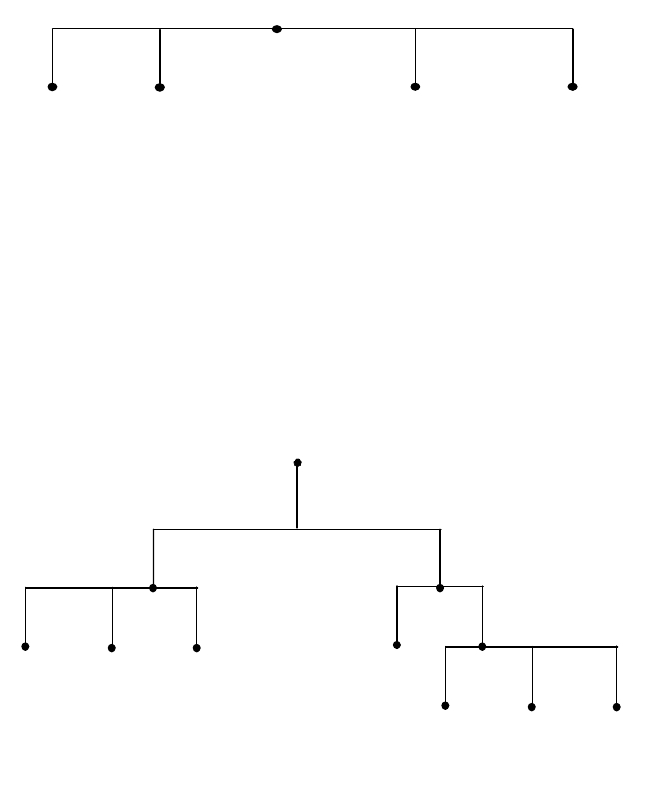

3.3.7 A Few Words on Creating Subdomains . . . .................. 112

3.3.8 ANoteonNamingInfrastructure..........................113

3.3.9 RegisteringAnOrganization’sDomainName ................ 113

3.3.10 DynamicDNSNames(DDNS)........................... 114

3.3.11 Microsoft Windows Considerations ....................... 115

3.3.12 FinalWordOnDNS................................... 118

3.4 Network Management . . . ................................... 118

3.4.1 TheVariousDisciplines................................. 119

3.4.2 The Mechanics of Network Management . . .................. 119

3.4.3 The Effects of Network Management on Networks.............123

3.4.4 The Management Strategy. . ............................. 124

Chapter 4. IP Routing and Design .............................. 127

4.1 TheNeedforRouting ...................................... 127

4.2 TheBasics .............................................. 128

4.3 TheRoutingProtocols...................................... 130

4.3.1 StaticRoutingversusDynamicRouting..................... 131

4.3.2 RoutingInformationProtocol(RIP) ........................ 135

4.3.3 RIPVersion2 ........................................ 137

4.3.4 OpenShortestPathFirst(OSPF).......................... 138

4.3.5 BorderGatewayProtocol-4(BGP-4) ....................... 141

4.4 Choosing a Routing Protocol ................................. 142

4.5 BypassingRouters ........................................ 144

4.5.1 RouterAccelerator..................................... 144

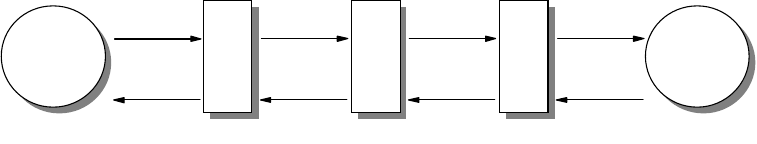

4.5.2 Next Hop Resolution Protocol (NHRP)...................... 145

4.5.3 RouteSwitching....................................... 148

4.5.4 MultiprotocoloverATM(MPOA) ..........................149

4.5.5 VLAN IP Cut-Through .................................. 150

4.6 Important Notes about IP Design . ............................. 151

v

4.6.1 Physical versus Logical Network Design .....................152

4.6.2 FlatversusHierarchicalDesign............................152

4.6.3 CentralizedRoutingversusDistributedRouting................152

4.6.4 Redundancy ..........................................153

4.6.5 FrameSize...........................................154

4.6.6 Filtering..............................................155

4.6.7 Multicast Support ......................................155

4.6.8 Policy-BasedRouting ...................................155

4.6.9 Performance..........................................155

Chapter 5. Remote Access .....................................159

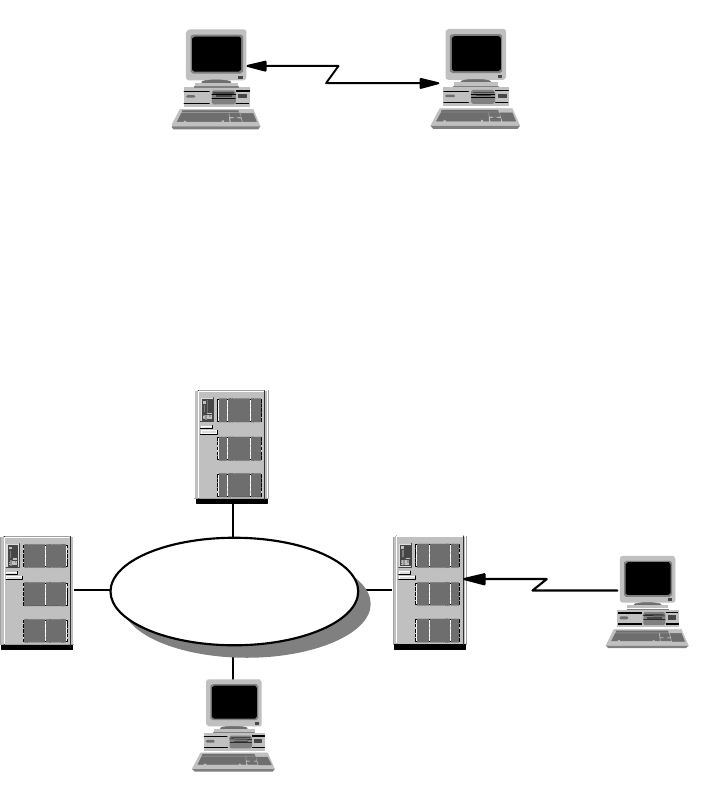

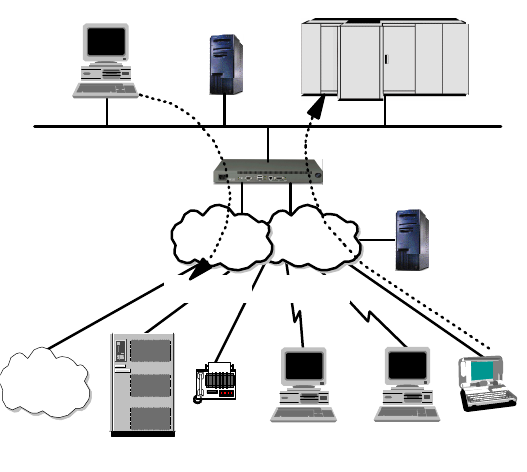

5.1 RemoteAccessEnvironments ................................159

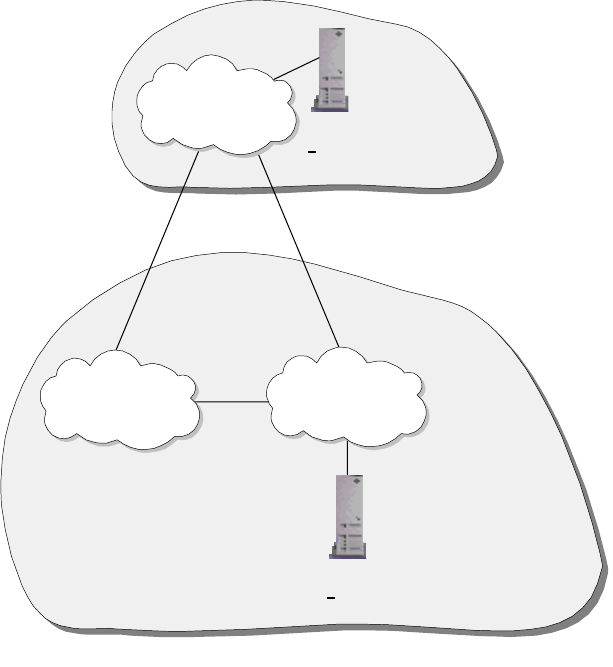

5.1.1 Remote-to-Remote.....................................159

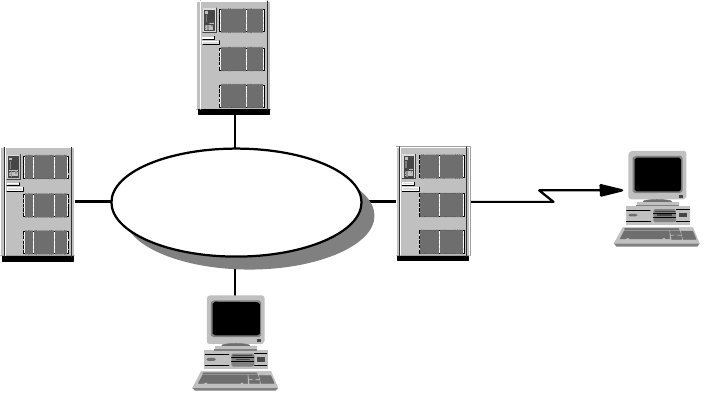

5.1.2 Remote-to-LAN........................................160

5.1.3 LAN-to-Remote........................................160

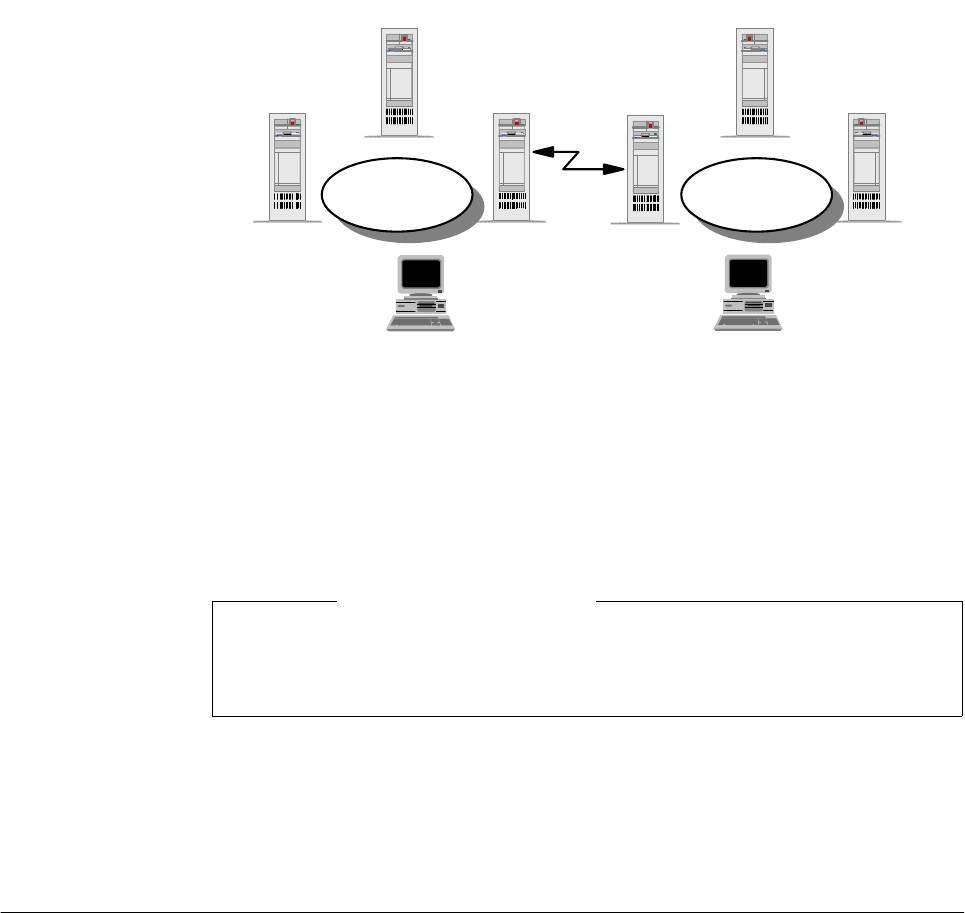

5.1.4 LAN-to-LAN...........................................161

5.2 Remote Access Technologies . ................................162

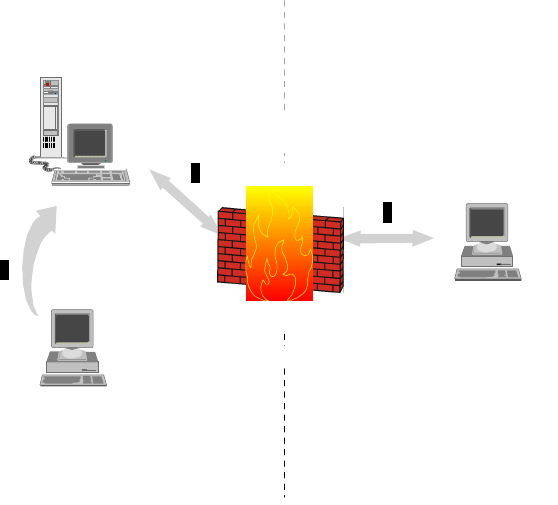

5.2.1 RemoteControlApproach................................163

5.2.2 RemoteClientApproach.................................163

5.2.3 RemoteNodeApproach .................................164

5.2.4 RemoteDialAccess....................................164

5.2.5 Dial Scenario Design....................................166

5.2.6 Remote Access Authentication Protocols ....................168

5.2.7 Point-to-Point Tunneling Protocol (PPTP) ....................170

5.2.8 Layer2Forwarding(L2F)................................171

5.2.9 Layer 2 Tunneling Protocol (L2TP) .........................172

5.2.10 VPNRemoteUserAccess...............................180

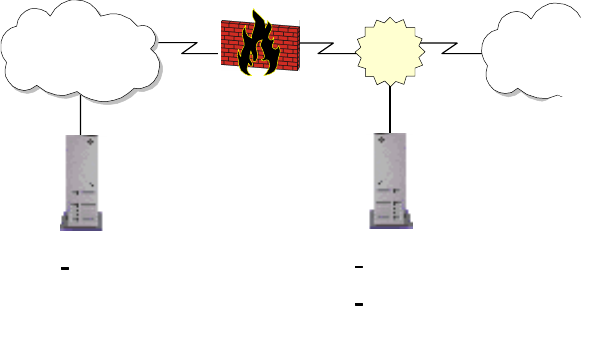

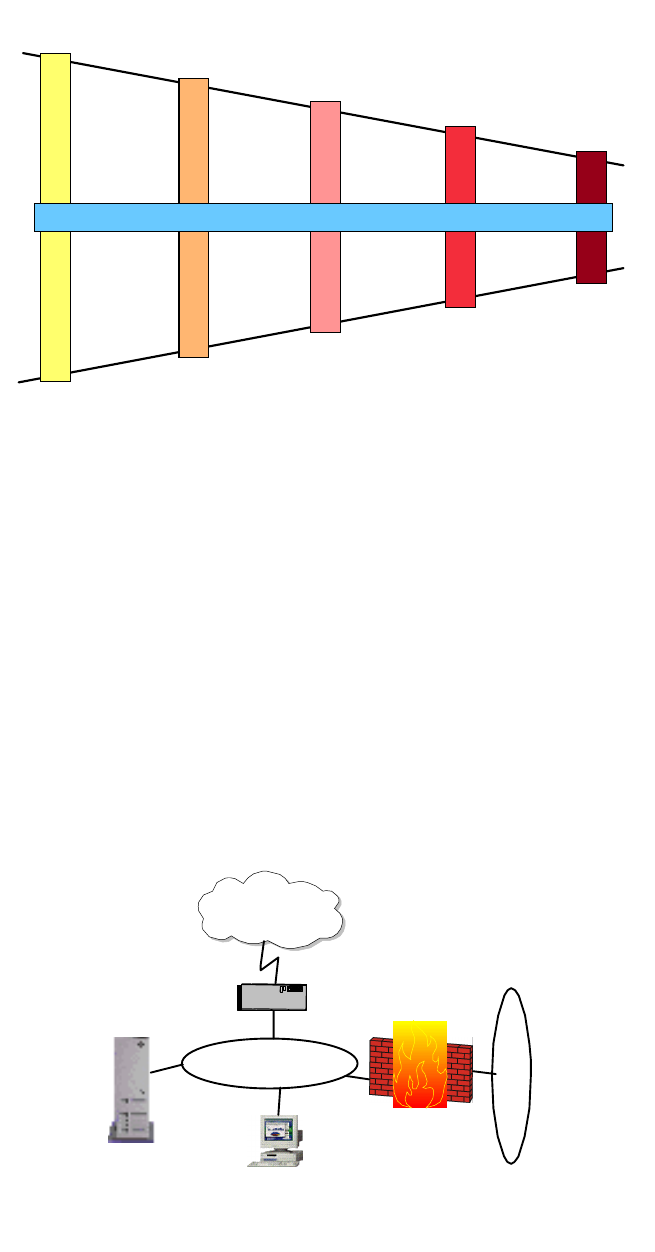

Chapter 6. IP Security.........................................187

6.1 SecurityIssues............................................187

6.1.1 CommonAttacks.......................................187

6.1.2 ObservingtheBasics ...................................187

6.2 SolutionstoSecurityIssues ..................................188

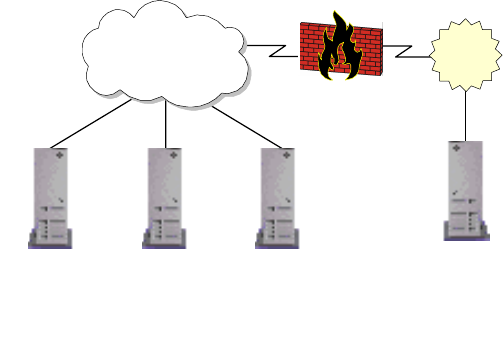

6.2.1 Implementations.......................................191

6.3 TheNeedforaSecurityPolicy................................192

6.3.1 NetworkSecurityPolicy..................................193

6.4 IncorporatingSecurityintoYourNetworkDesign ..................194

6.4.1 Expecting the Worst, Planning for the Worst . . ................194

6.4.2 Which Technology To Apply, and Where? ....................195

6.5 Security Technologies. ......................................197

6.5.1 SecuringtheNetwork ...................................197

6.5.2 SecuringtheTransactions................................210

6.5.3 SecuringtheData......................................215

6.5.4 SecuringtheServers....................................218

6.5.5 HotTopicsinIPSecurity.................................218

Chapter 7. Multicasting and Quality of Service.....................227

7.1 TheRoadtoMulticasting ....................................227

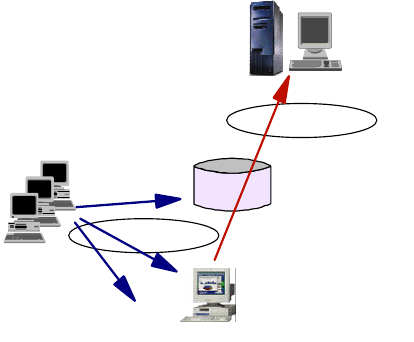

7.1.1 BasicsofMulticasting...................................229

7.1.2 TypesofMulticastingApplications..........................229

7.2 Multicasting...............................................229

7.2.1 Multicast Backbone on the Internet (MBONE) . ................230

7.2.2 IPMulticastTransport...................................231

7.2.3 MulticastRouting ......................................234

vi IP Network Design Guide

7.2.4 MulticastAddressResolutionServer(MARS) ................ 238

7.3 DesigningaMulticastingNetwork ............................. 239

7.4 QualityofService ......................................... 241

7.4.1 TransportforNewApplications ........................... 241

7.4.2 QualityofServiceforIPNetworks......................... 243

7.4.3 ResourceReservationProtocol(RSVP)..................... 243

7.4.4 Multiprotocol Label Switching (MPLS) ...................... 244

7.4.5 DifferentiatedServices.................................. 245

7.5 Congestion Control ........................................ 245

7.5.1 First-In-First-Out(FIFO)................................. 246

7.5.2 Priority Queuing....................................... 246

7.5.3 Weighted Fair Queuing (WFQ)............................ 246

7.6 ImplementingQoS......................................... 247

Chapter 8. Internetwork Design Study ........................... 249

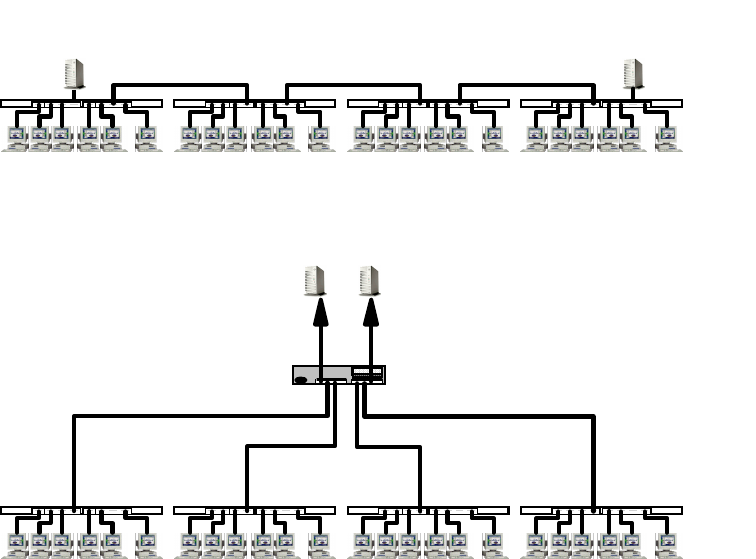

8.1 SmallSizedNetwork(<80Users) ............................. 249

8.1.1 Connectivity Design . ................................... 250

8.1.2 Logical Network Design ................................. 252

8.1.3 Network Management .................................. 253

8.1.4 Addressing........................................... 254

8.1.5 Naming ............................................. 255

8.1.6 Connecting the Network to the Internet . . . .................. 255

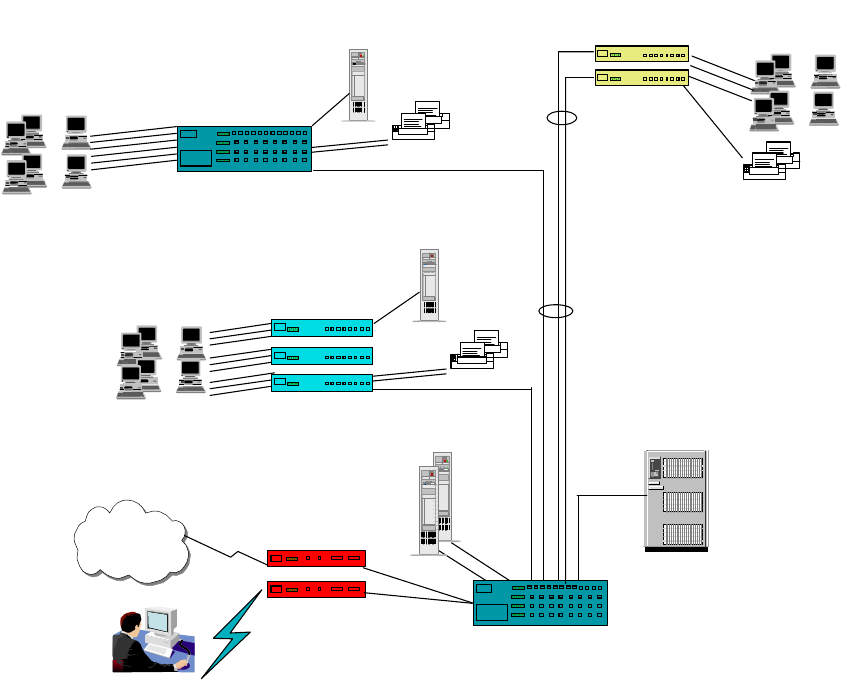

8.2 MediumSizeNetwork(<500Users)............................ 256

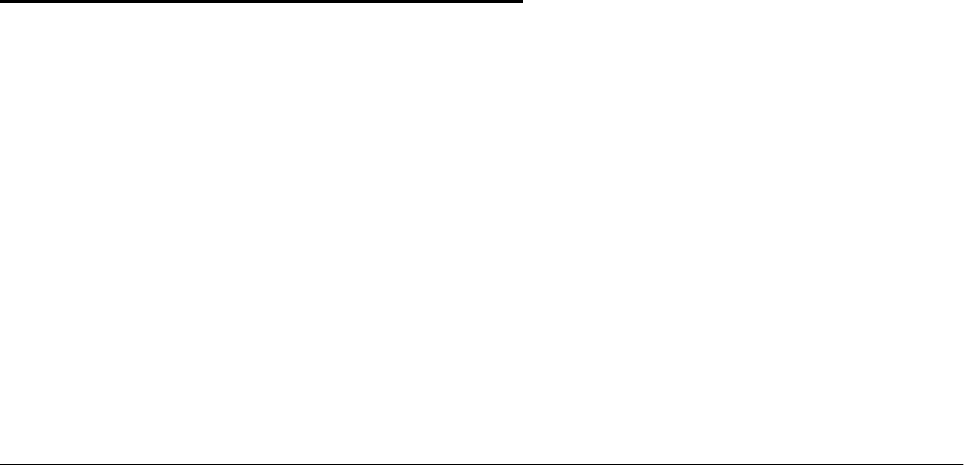

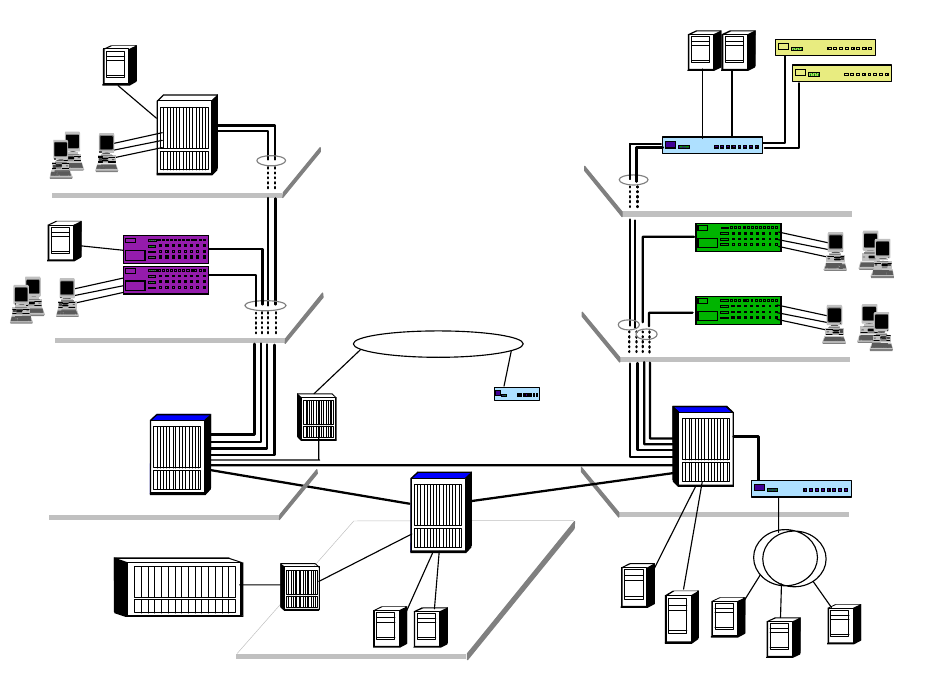

8.2.1 Connectivity Design . ................................... 258

8.2.2 Logical Network Design ................................. 259

8.2.3 Addressing........................................... 261

8.2.4 Naming ............................................. 262

8.2.5 RemoteAccess....................................... 263

8.2.6 Connecting the Network to the Internet . . . .................. 264

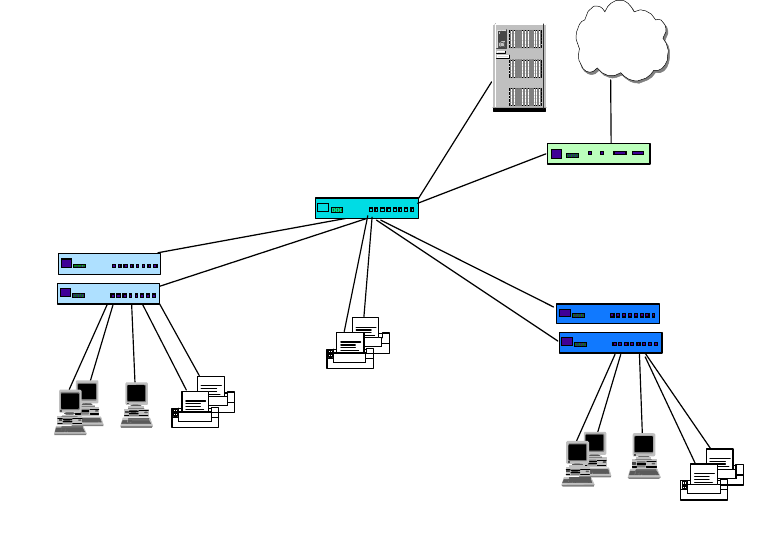

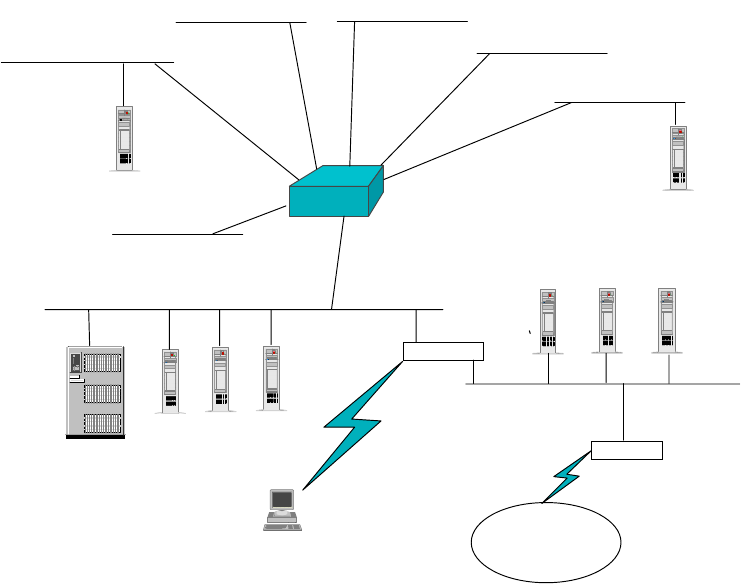

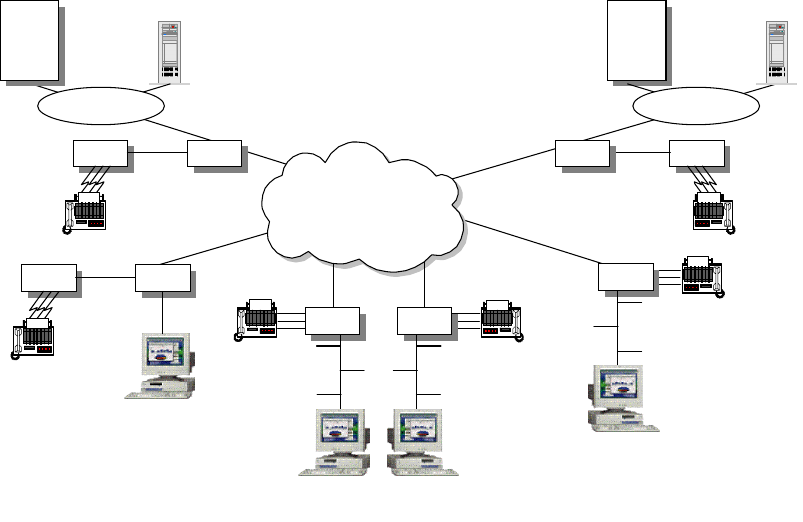

8.3 LargeSizeNetwork(>500Users)............................. 265

Appendix A. Voice over IP ........................................271

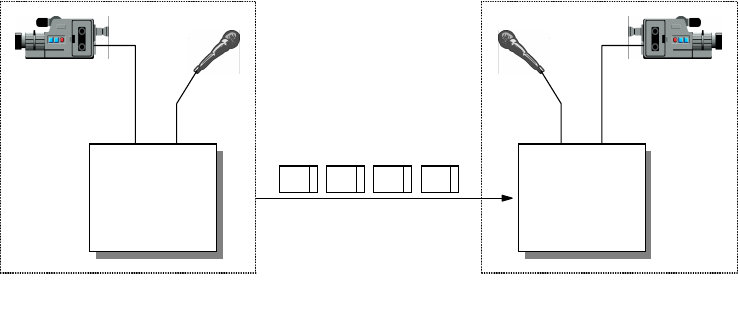

A.1 The Need for Standardization ....................................271

A.1.1 The H.323 ITU-T Recommendations . . . ........................271

A.2 TheVoiceoverIPProtocolStack .................................273

A.3 VoiceTerminologyandParameters................................273

A.4 VoiceoverIPDesignandImplementations..........................275

A.4.1 TheVoiceoverIPDesignApproach...........................277

Appendix B. IBM TCP/IP Products Functional Overview ..............279

B.1 SoftwareOperatingSystemImplementations........................279

B.2 IBMHardwarePlatformImplementations ...........................284

Appendix C. Special Notices ......................................287

Appendix D. Related Publications .................................289

D.1 International Technical Support Organization Publications . . . ...........289

D.2 Redbooks on CD-ROMs . . . .....................................289

D.3 OtherResources..............................................289

How to Get ITSO Redbooks .................................... 291

IBM Redbook Order Form ...........................................292

viii IP Network Design Guide

© Copyright IBM Corp. 1995 1999 ix

Preface

This redbook identifies some of the basic design aspects of IP networks and

explains how to deal with them when implementing new IP networks or

redesigning existing IP networks. This project focuses on internetwork and

transport layer issues such as address and name management, routing, network

management, security, load balancing and performance, design impacts of the

underlying networking hardware, remote access, quality of service, and

platform-specific issues. Application design aspects, such as e-mail, gateways,

Web integration, etc., are discussed briefly where they influence the design of an

IP network.

After a general discussion of the aforementioned design areas, this redbook

provides three examples for IP network design, depicting a small, medium and

large network. You are taken through the steps of the design and the reasoning

as to why things are shown one way instead of another. Of course, every network

is different and therefore these examples are not intended to generalize. Their

main purpose is to illustrate a systematic approach to an IP network design given

a specific set of requirements, expectations, technologies and budgets.

This redbook will help you design, create or change IP networks implementing

the basic logical infrastructures required for a successful operation of such

networks. This book does not describe how to deploy corporate applications such

as e-mail, e-commerce, Web server or distributed databases, just to name a few.

How This Book Is Organized

Chapter 1 contains an introduction to TCP/IP and to important considerations of

network design in general. It explains the importance of applications and

business models that ultimately dictate the way a design approach will take,

which is important for you to understand before you begin the actual network

design.

Chapter 2 contains an overview of network hardware, infrastructure and standard

protocols on top of which IP networks can be built. It describes the benefits and

peculiarities of those architectures and points out specific issues that are

important when IP networks are to be built on top of a particular network.

Chapter 3 contains information on structuring IP networks in regard to addresses,

domains and names. It explains how to derive the most practical

implementations, and it describes the influence that each of those can have on

the network design.

Chapter 4 explains routing, a cornerstone in any IP network design. This chapter

closes the gap between the network infrastructure and the logical structure of the

IP network that runs on top of it. If you master the topics and suggestions in this

chapter, you will have made the biggest step toward a successful design.

Chapter 5 contains information on remote access, one of the fastest growing

areas in IP networks today. This information will help you identify the issues that

are inherent to various approaches of remote access and it will help you find the

right solution to the design of such network elements.

xIP Network Design Guide

Chapter 6 contains information on IP security. It illustrates how different security

architectures protect different levels of the TCP/IP stack, from the application to

the physical layer, and what the influences of some of the more popular security

architectures are on the design of IP networks.

Chapter 7 gives you a thorough tune-up on IP multicasting and IP quality of

service (QoS), describing the pros and cons and the best design approaches to

networks that have to include these features.

Chapter 8 contains descriptions of sample network designs for small, medium

and large companies that implement an IP network in their environment. These

examples are meant to illustrate a systematic design approach but are slightly

influenced by real-world scenarios.

Appendix A provides an overview of the Voice over IP technology and design

considerations for implementing it.

Appendix B provides a cross-platform TCP/IP functional comparison for IBM

hardware and software and Microsoft Windows platforms.

The Team That Wrote This Redbook

This redbook was produced by a team of specialists from around the world

working at the International Technical Support Organization, Raleigh Center. The

leader of this project was Martin W. Murhammer.

Martin W. Murhammer is a Senior I/T Availability Professional at the ITSO

Raleigh Center. Before joining the ITSO in 1996, he was a Systems Engineer in

the Systems Service Center at IBM Austria. He has 13 years of experience in the

personal computing environment including areas such as heterogeneous

connectivity, server design, system recovery, and Internet solutions. He is an IBM

Certified OS/2 and LAN Server Engineer and a Microsoft Certified Professional

for Windows NT. Martin has co-authored a number of redbooks during

residencies at the ITSO Raleigh and Austin Centers. His latest publications are

TCP/IP Tutorial and Technical Overview,

GG24-3376, and

A Comprehensive

Guide to Virtual Private Networks Volume 1: IBM Firewall, Server and Client

Solutions,

SG24-5201.

Kok-Keong Lee is an Advisory Networking Specialist with IBM Singapore. He

has 10 years of experience in the networking field. He holds a degree in

Computer and Information Sciences from the National University of Singapore.

His areas of expertise include ATM, LAN switches and Fast Internet design for

cable/ADSL networks.

Payam Motallebi is an IT Specialist with IBM Australia. He has three years of

experience in the IT field. He holds a degree in Computer Engineering from

Wollongong University where he is currently undertaking a Master of Computer

Engineering in Digital Signal Processing. He has worked at IBM for one year. His

areas of expertise include UNIX, specifically AIX, and TCP/IP services.

Paolo Borghi is a System Engineer in the IBM Global Services Network Services

at IBM Italia S.p.A. He has three years of experience in the TCP/IP and

Multiprotocol internetworking area in the technical support for Network

xi

Outsourcing and in network design for cross industries solutions. He holds a

degree in High Energy Particle Physics from Universita degli Studi di Milano.

Karl Wozabal is a Senior Networking Specialist at the ITSO Raleigh Center. He

writes extensively and teaches IBM classes worldwide on all areas of TCP/IP.

Before joining the ITSO, Karl worked at IBM Austria as a Networking Support

Specialist.

Thanks to the following people for their invaluable contributions to this project:

Jonathan Follows, Shawn Walsh, Linda Robinson

International Technical Support Organization, Raleigh Center

Thanks to the authors of the first edition of this redbook:

Alfred B. Christensen, Peter Hutchinson, Andrea Paravan, Pete Smith

Comments Welcome

Your comments are important to us!

We want our redbooks to be as helpful as possible. Please send us your

comments about this or other redbooks in one of the following ways:

• Fax the evaluation form found in “ITSO Redbook Evaluation” on page 309 to

the fax number shown on the form.

• Use the online evaluation form found at http://www.redbooks.ibm.com

• Send your comments in an Internet note to redbook@us.ibm.com

xii IP Network Design Guide

© Copyright IBM Corp. 1995 1999 1

Chapter 1. Introduction

We have seen dramatic changes in the business climate in the 1990s, especially

with the growth of e-business on the Internet. More business is conducted

electronically and deals are closed in lightning speed. These changes have

affected how a company operates in this electronic age and computer systems

have taken a very important role in a company’s profile. The Internet has

introduced a new turf for companies to compete and more companies are going

global at the same time to grow revenues. Connectivity has never been as

important as it is today.

The growth of the Internet has reached a stage where a company has to get

connected to it in order to stay relevant and compete. The traditional text-based

transaction systems have been replaced by Web-based applications with

multimedia contents. The technologies that are related to the Internet have

become mandatory subjects not only for MIS personnel, but even the CEO. And

TCP/IP has become a buzzword overnight.

• What is TCP/IP?

• How does one build a TCP/IP network?

• What are the technologies involved?

• How does one get connected to the Internet, if the need arises?

• Are there any guidelines?

While this book does not and cannot teach you how to run your business, it briefly

describes the various TCP/IP components and provides a comprehensive

approach in building a TCP/IP network.

1.1 The Internet Model

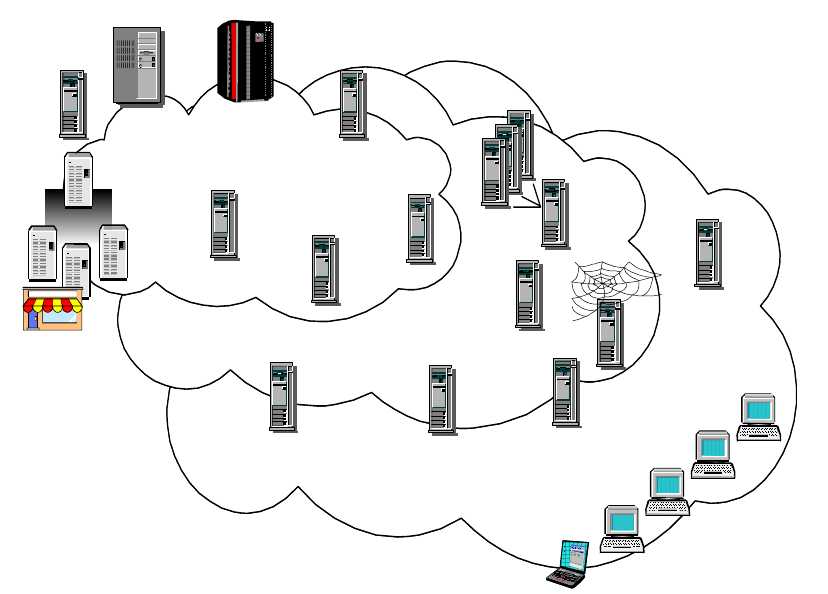

It has been estimated that there are currently 40,000,000 hosts connected to the

Internet. The rapid rise in popularity of the Internet is mainly due to the World

Wide Web (WWW) and e-mail systems that enable free exchanges of information.

A cursory glance at the history of the Internet and its growth enables you to

understand the reason for its popularity and perhaps, predict some trend towards

how future networks should be built.

1.1.1 A Brief History of the Internet and IP Technologies

In the 1960s and 1970s, many different networks were running their own

protocols and implementations. Sharing of information among these networks

soon became a problem and there was a need for a common protocol to be

developed. The Defense Advanced Research Projects Agency (DARPA) funded

the exploration of this common protocol and the ARPANET protocol suite, which

introduced the fundamental concept of layering. The TCP/IP protocol suite then

evolved from the ARPANET protocol suite and took its shape in 1978. With the

use of TCP/IP, a network was created that was mainly used by government

agencies and research institutes for the purpose of information sharing and

research collaboration.

In the early 1980s TCP/IP became the backbone protocol in multivendor networks

such as ARPANET, NFSNET and regional networks. The protocol suite was

2IP Network Design Guide

integrated into the University of California at Berkeley′ s UNIX operating system

and became available to the public for a nominal fee. From this point on TCP/IP

became widely used due to its inexpensive availability in UNIX and its spread to

other operating systems.

Today, TCP/IP provides the ability for corporations to merge differing physical

networks while giving users a common suite of functions. It allows interoperability

between equipment supplied by multiple vendors on multiple platforms, and it

provides access to the Internet.

The Internet of today consists of large international, national and regional

backbone networks, which allow local and campus networks and individuals

access to global resources. Use of the Internet has grown exponentially over the

last three years, especially with the consumer market adopting it.

So why has the use of TCP/IP grown at such a rate?

The reasons include the availability of common application functions across

differing platforms and the ability to access the Internet, but the primary reason is

that of interoperability. The open standards of TCP/IP allow corporations to

interconnect or merge different platforms. An example is the simple case of

allowing file transfer capability between an IBM MVS/ESA host and, perhaps, an

Apple Macintosh workstation.

TCP/IP also provides transport for other protocols such as IPX, NetBIOS or SNA.

For example, these protocols could make use of a TCP/IP network to connect to

other networks of similar protocol.

One further reason for the growth of TCP/IP is the popularity of the socket

programming interface, which is the programming interface between the TCP/IP

transport protocol layer and TCP/IP applications. A large number of applications

today have been written for the TCP/IP socket interface. The Request for

Comments (RFC) process, overseen by the Internet Architecture Board (IAB) and

the Internet Engineering Task Force (IETF), provides for the continual upgrading

and extension of the protocol suite.

1.1.2 The Open Systems Interconnection (OSI) Model

Around the time that DARPA was researching into an internetworking protocol

suite, which eventually led to TCP/IP and the Internet (see 1.1.1, “A Brief History

of the Internet and IP Technologies” on page 1), an alternative standard approach

was being led by the CCITT (Comité Consultatif International Telegraphique et

Telephonique, or Consultative Committee on International Telegraph and

Telephone), and the ISO (International Organization for Standardization). The

CCITT has since become the ITU-T (International Telecommunication Union -

Telecommunication).

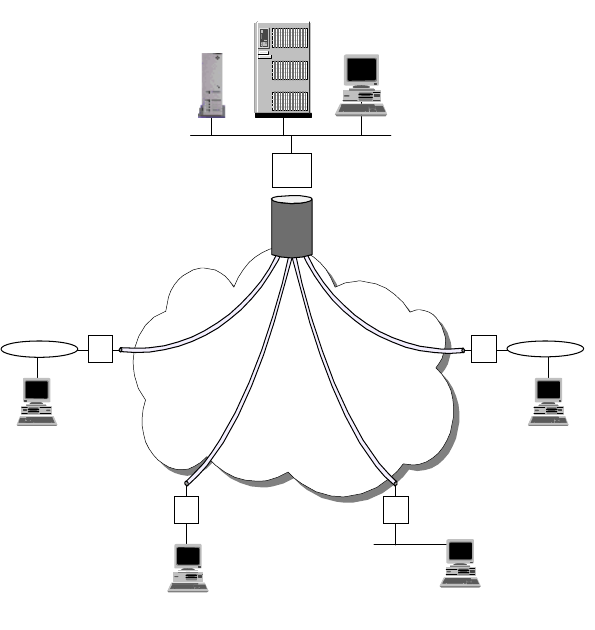

The resulting standard was the OSI (Open Systems Interconnection) Reference

Model (ISO 7498), which defined a seven-layer model of data communications,

as shown in Figure 1 on page 3. Each layer of the OSI Reference Model provides

a set of functions to the layer above and, in turn, relies on the functions provided

by the layer below. Although messages can only pass vertically through the stack

from layer to layer, from a logical point of view, each layer communicates directly

with its peer layer on other nodes.

Introduction 3

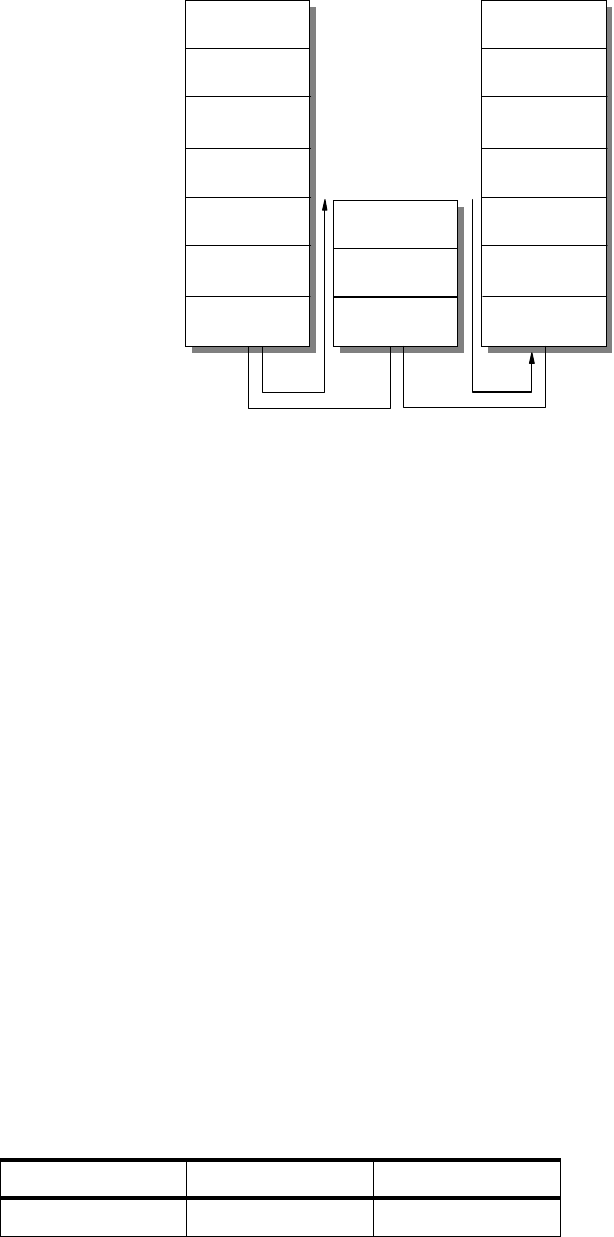

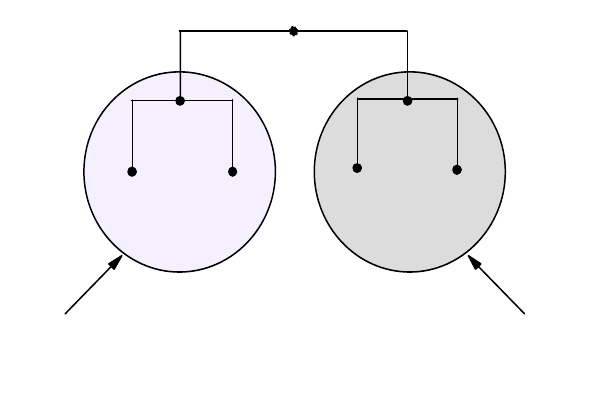

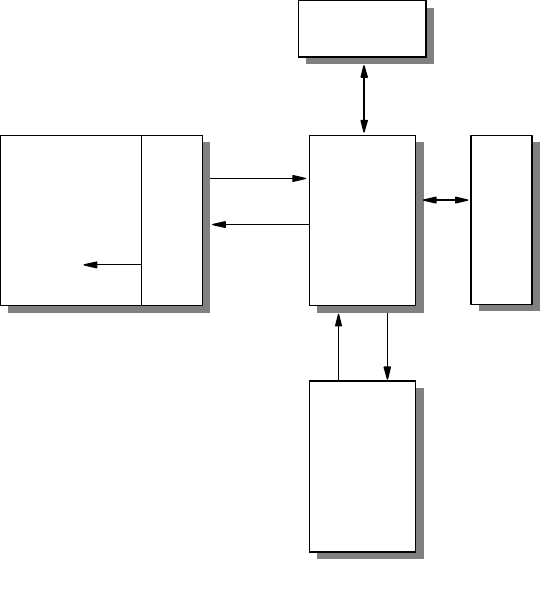

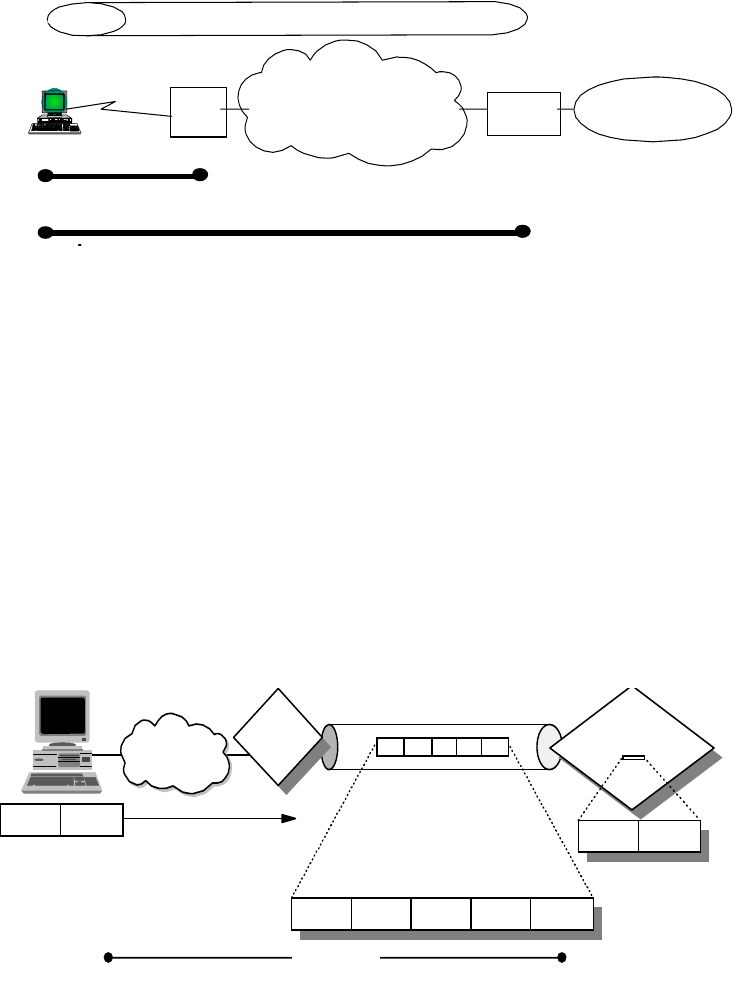

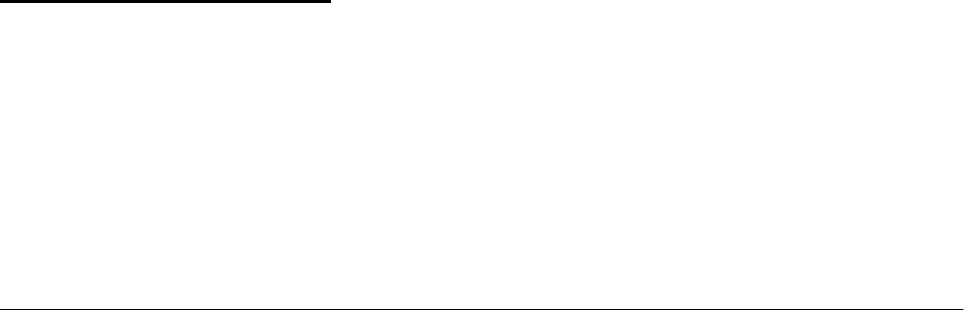

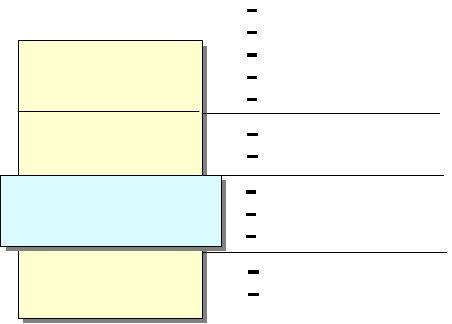

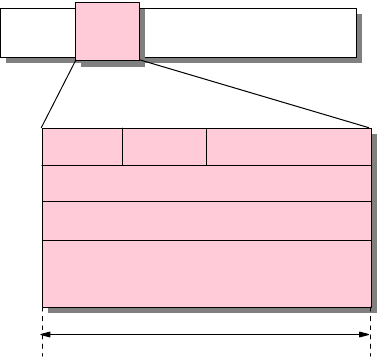

Figure 1. OSI Reference Stack

The seven layers are:

Application

The application layer gives the user access to all the lower OSI functions, and

its purpose is to support semantic exchanges between applications existing in

open systems. An example is the Web browser.

Presentation

The presentation layer is concerned with the representation of user or system

data. This includes necessary conversations (for example, a printer control

character), and code translation (for example, ASCII to EBCDIC).

Session

The session layer provides mechanisms for organizing and structuring

interaction between applications and/or devices.

Transport

The transport layer provides transparent and reliable end-to-end data transfer,

relying on lower layer functions for handling the peculiarities of the actual

transfer medium. TCP and UDP are examples of a Transport layer protocol.

Network

The network layer provides the means to establish connections between

networks. The standard also includes procedures for the operational control of

internetwork communications and for the routing of information through

multiple networks. The IP is an example of a Network layer protocol.

Data Link

The data link layer provides the functions and protocols to transfer data

between network entities and to detect (and possibly correct) errors that may

occur in the physical layer.

Application

Presentation

Session

Transport

Network

Data Link

Physical

Application

Presentation

Session

Transport

Network

Data Link

Physical

3376A\3376F1D5

4IP Network Design Guide

Physical

The physical layer is responsible for physically transmitting the data over the

communication link. It provides the mechanical, electrical, functional and

procedural standards to access the physical medium.

The layered approach was selected as a basis to provide flexibility and

open-ended capability through defined interfaces. The interfaces permit some

layers to be changed while leaving other layers unchanged. In principle, as long

as standard interfaces to the adjacent layers are adhered to, an implementation

can still work.

1.1.3 The TCP/IP Model

While the OSI protocols developed slowly, due mainly to their formal committee-

based engineering approach, the TCP/IP protocol suite rapidly evolved and

matured. With its public Request for Comments (RFC) policy of improving and

updating the protocol stack, it has established itself as the protocol of choice for

most data communication networks.

As in the OSI model and most other data communication protocols, TCP/IP

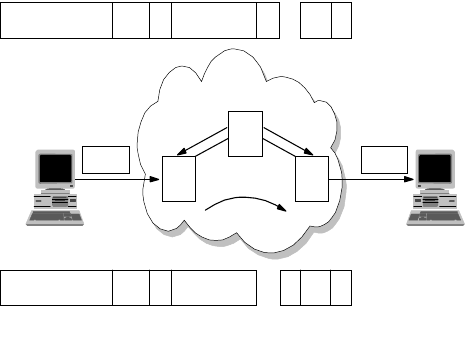

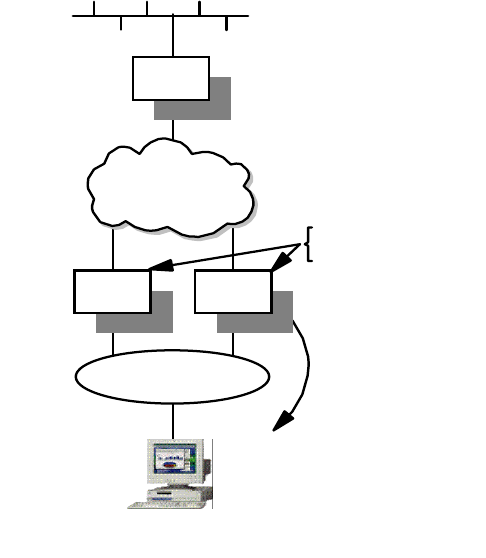

consists of a protocol stack, made up of four layers (see Figure 2 on page 4).

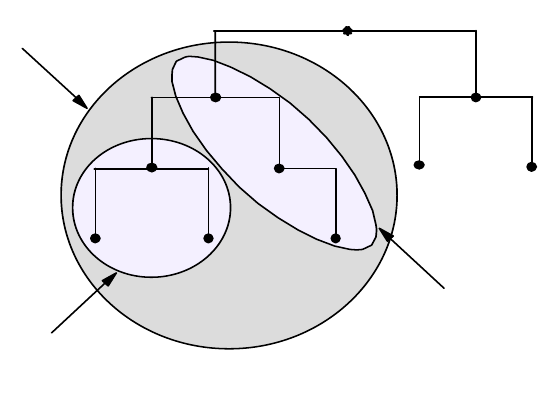

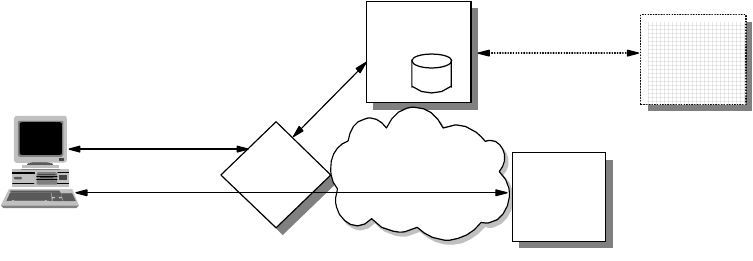

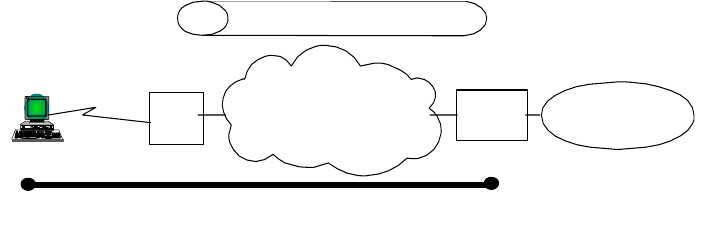

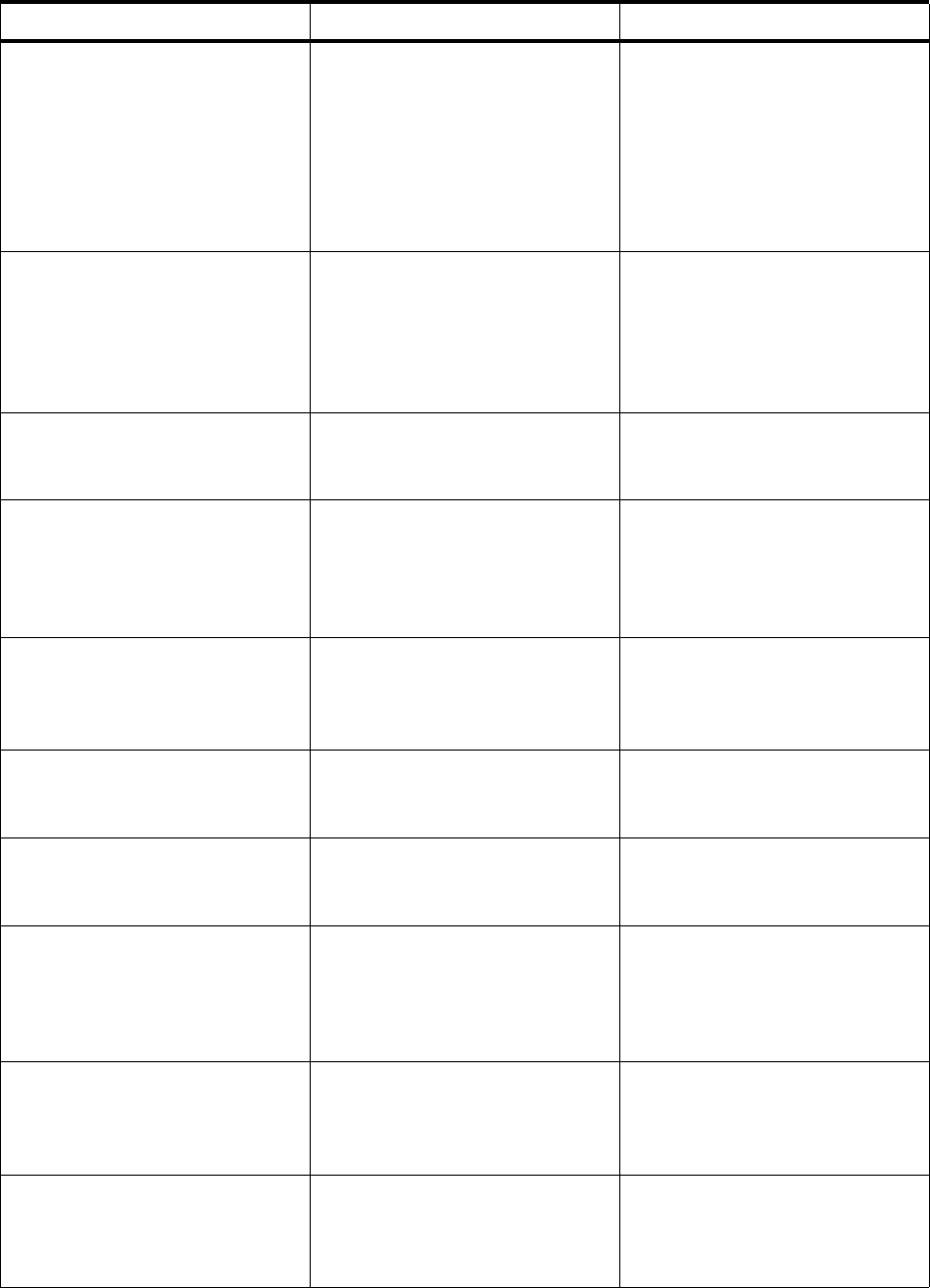

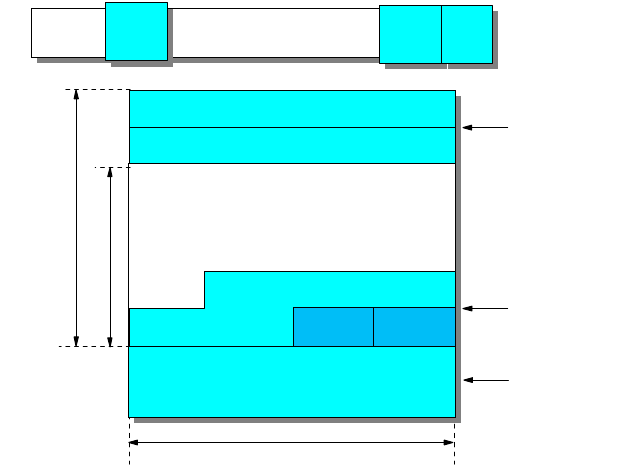

Figure 2. TCP/IP Stack

The layers of the TCP/IP protocol are:

Application Layer

The application layer is provided by the user’s program that uses TCP/IP for

communication. Examples of common applications that use TCP/IP are Telnet,

FTP, SMTP, and Gopher. The interfaces between the application and transport

layers are defined by port numbers and sockets.

Transport Layer

The transport layer provides the end-to-end data transfer. It is responsible for

providing a reliable exchange of information. The main transport layer protocol is

the Transmission Control Protocol (TCP). Another transport layer protocol is User

Datagram Protocol (UDP), which provides a connectionless service in

Applications

Transport

Internetwork

Network Interface

and

Hardware

Applications

TCP/UDP

ICMP

IP ARP/RARP

Network Interface

and Hardware

.......

.......

.......

.......

3376a\3376F1D2

Introduction 5

comparison to TCP, which provides a connection-oriented service. That means

that applications using UDP as the transport protocol have to provide their own

end-to-end flow control. Usually, UDP is used by applications that need a fast

transport mechanism.

Internetwork Layer

The internetwork layer, also called the internet layer or the network layer,

separates the physical network from the layers above it. The Internet Protocol (IP)

is the most important protocol in this layer. It is a connectionless protocol that

doesn't assume reliability from the lower layers. IP does not provide reliability,

flow control or error recovery. These functions must be provided at a higher level,

namely the transport layer if using TCP or the application layer if using UDP.

A message unit in an IP network is called an IP datagram. This is the basic unit of

information transmitted across TCP/IP networks. IP provides routing functions for

distributing these datagrams to the correct recipient for the protocol stack. Other

internetwork layer protocols are ICMP, IGMP, ARP and RARP.

Network Interface Layer

The network interface layer, also called the link layer or the data link layer, is the

interface to the actual network hardware. This layer does not guarantee reliable

delivery; that is left to the higher layers, and may be packet or stream oriented.

TCP/IP does not specify any particular protocol for this layer. It can use almost

any network interface available making it a flexible network while providing

backwards compatibility with legacy infrastructure. Examples of supported

network interface protocols are IEEE 802.2, X.25 (which is reliable in itself), ATM,

FDDI and even SNA.

1.1.4 The Need for Design in IP Networks

If you do not take time to plan your network, the ease of interconnection through

the use of TCP/IP can lead to problems. The purpose of this book is to point out

some of the problems and highlight the types of decisions you will need to make

as you consider implementing a TCP/IP solution.

For example, lack of effective planning of network addresses may result in

serious limitations in the number of hosts you are able to connect to your network.

Lack of centralized coordination may lead to duplicate resource names and

addresses, which may prevent you from being able to interconnect isolated

networks. Address mismatches may prevent you from connecting to the Internet,

and other possible problems may include the inability to translate resource names

to resource addresses because connections have not been made between name

servers.

Some problems arising from a badly designed or an unplanned network are trivial

to correct. Some, however, require significant time and effort to correct. Imagine

manually configuring every host on a 3000-host network because the addressing

scheme chosen no longer fits a business’ needs!

When faced with the task of either designing a new TCP/IP network or allowing

existing networks to interconnect, there are several important design issues that

will need to be resolved. For example, how to allocate addresses to network

resources, how to alter existing addresses, whether to use static or dynamic

routing, how to configure your name servers and how to protect your network are

6IP Network Design Guide

all questions that need to be answered. At the same time the issues of reliability,

availability and backup will need to be considered, along with how you will

manage and administer your network.

The following chapters will discuss these and other concerns, and provide the

information you need to make your decisions. Where possible we will provide

general guidelines for IP network design rather than discussing product-specific

or platform-specific considerations. This is because the product-specific

documentation in most cases already exists and provides the necessary details

for configuration and implementation. We will not attempt to discuss TCP/IP

applications in any depth due to the information also being available to you in

other documents.

1.1.5 Designing an IP Network

Due to the simplicity and flexibility of IP, a network can be "hacked" together in an

unordered fashion. It is common for a network to be connected in this manner,

and this may work well for small networks. The problem arises when changes are

required and documentation is not found. Worst of all, if the network

design/implementation teams leave the organization, the replacements are left

with the daunting task of finding out what the network does, how it fits together,

and what goes where!

An IP network that has not been designed in a systematic fashion will invariably

run into problems from the beginning of the implementation stage. When you are

upgrading an existing network, there are usually legacy networks that need to be

connected. Introducing of new technology without studying the limitations of the

current network may lead to unforeseen problems. You may end up trying to solve

a problem that was created unnecessarily. For example, the introduction of an

Ethernet network in a token-ring environment has to be carefully studied.

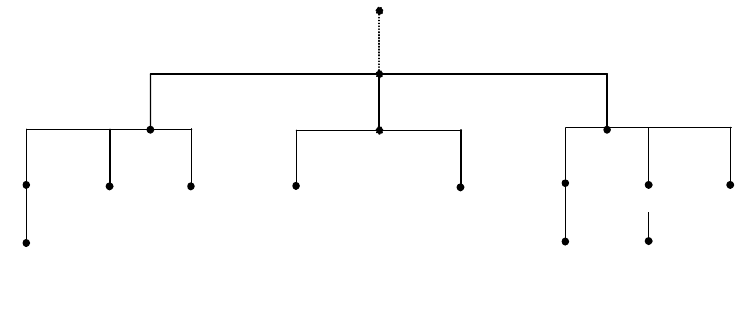

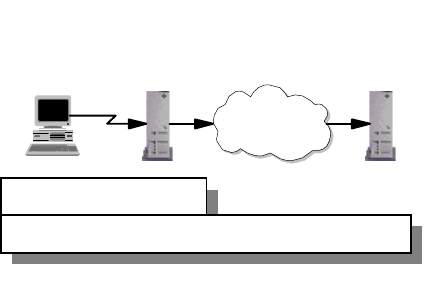

The design of the network must take place before any implementation takes

place. The design of the IP network must also be constantly reviewed as

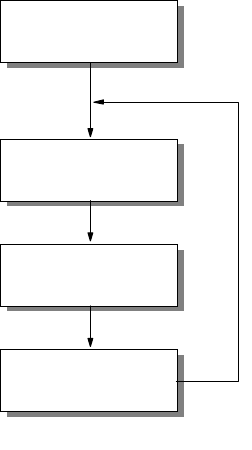

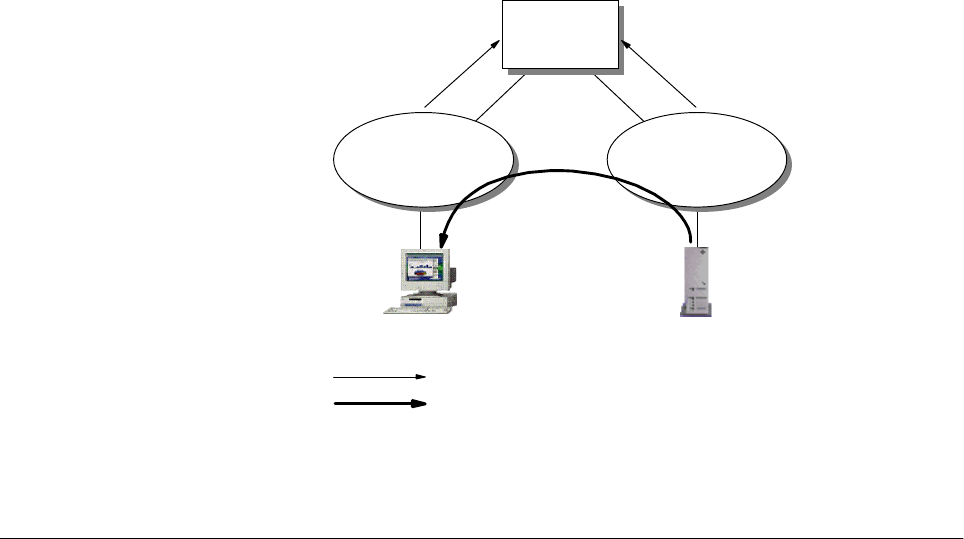

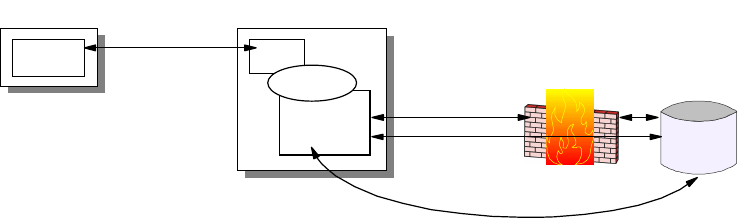

requirements change over time, as illustrated in Figure 3 on page 7.

Introduction 7

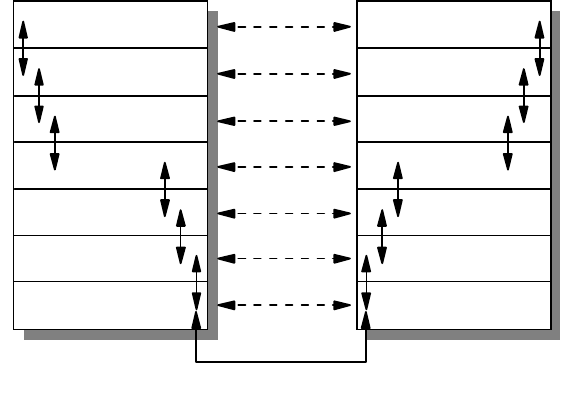

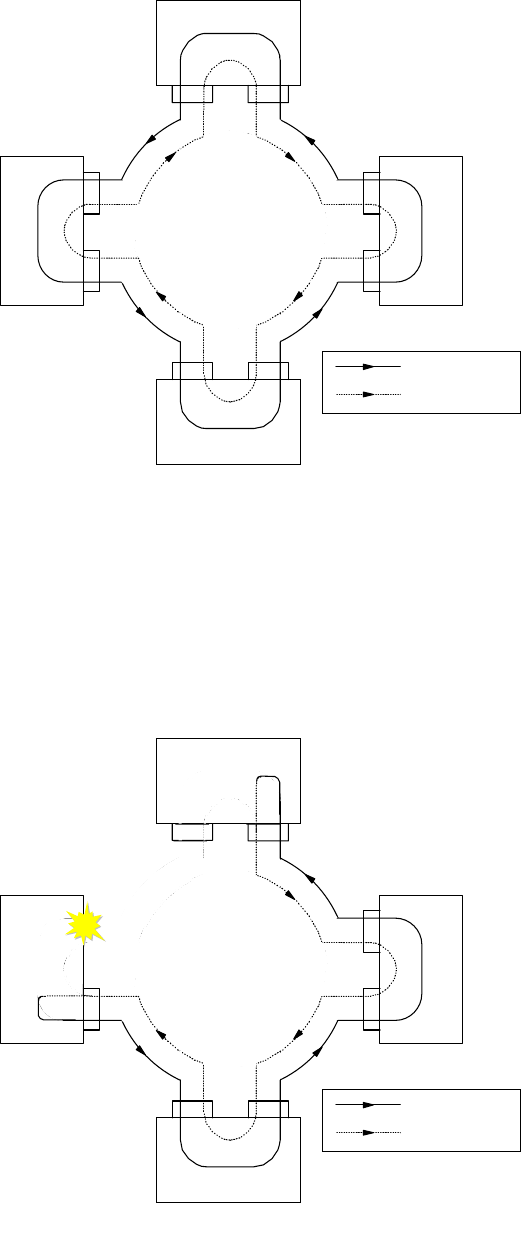

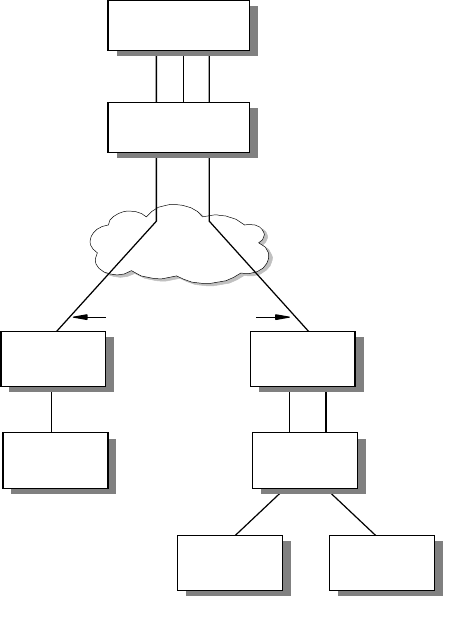

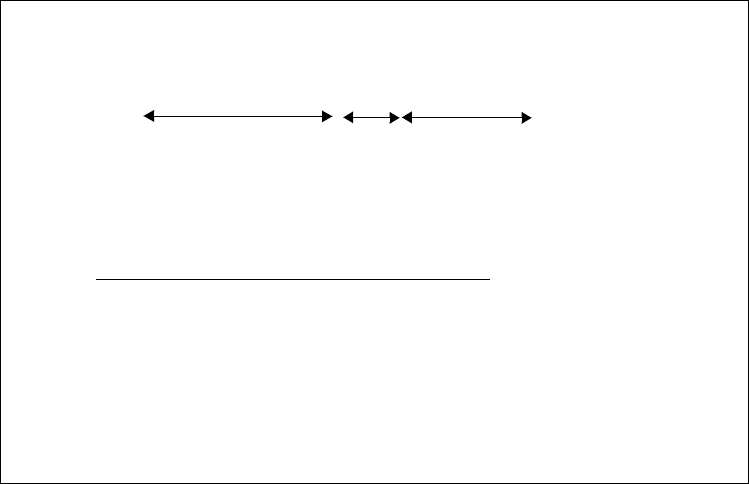

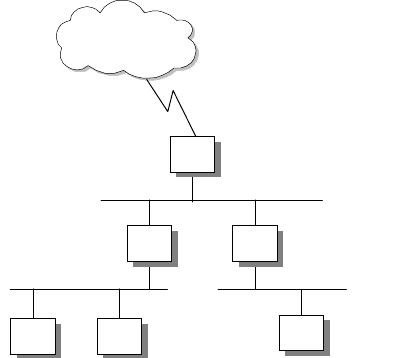

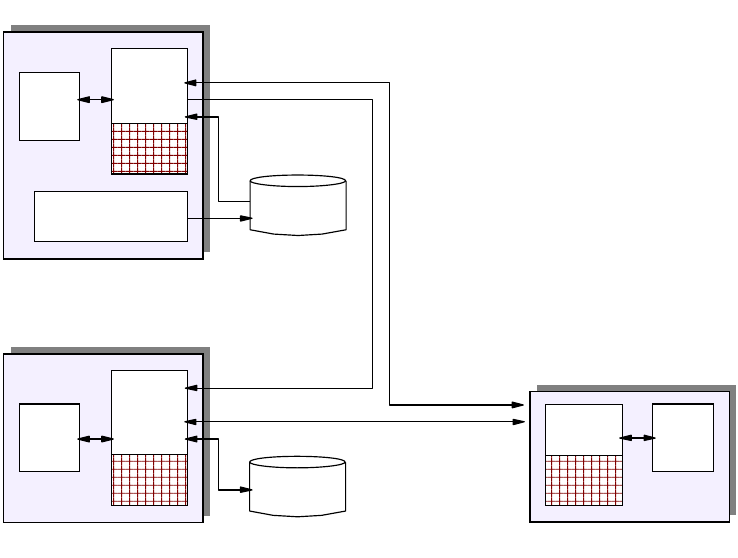

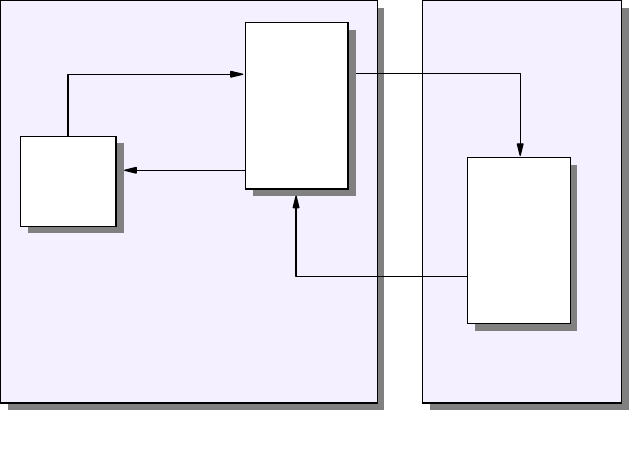

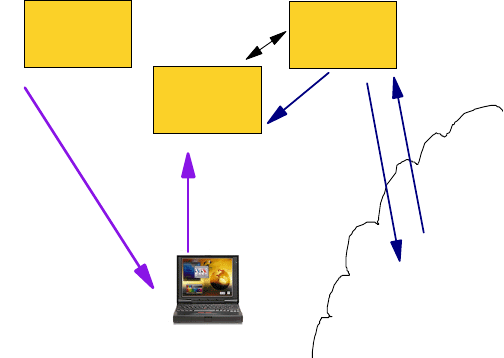

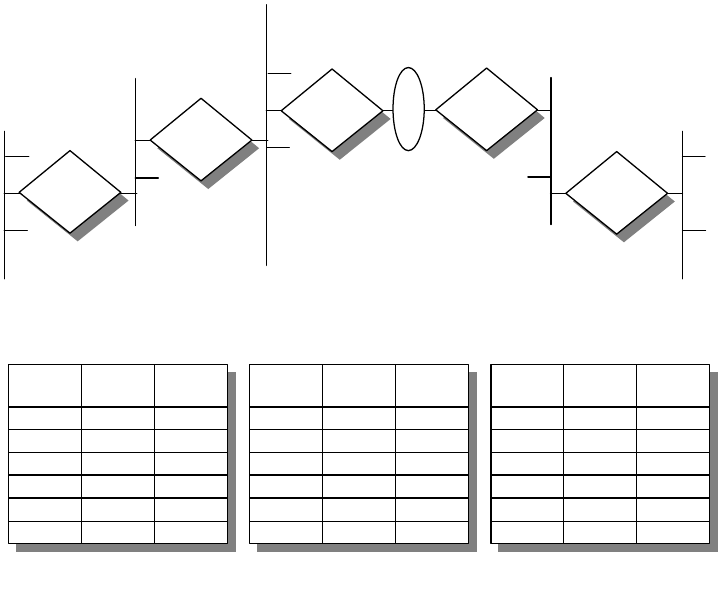

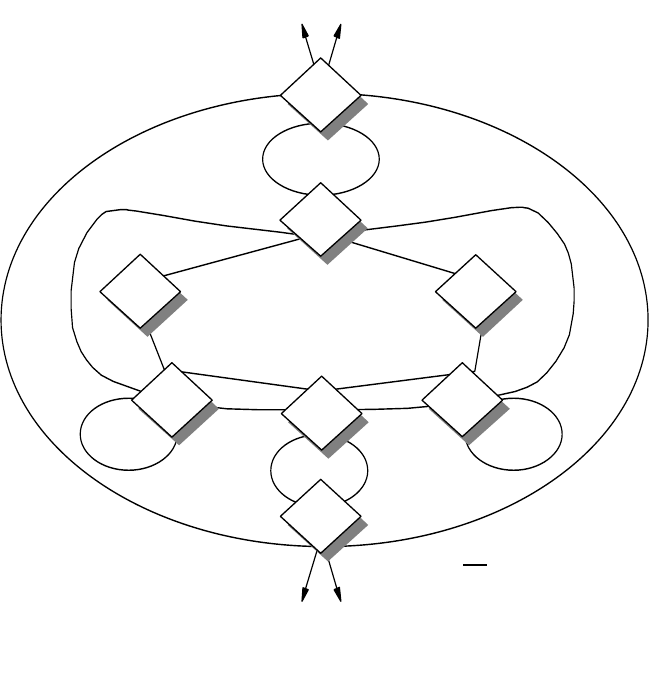

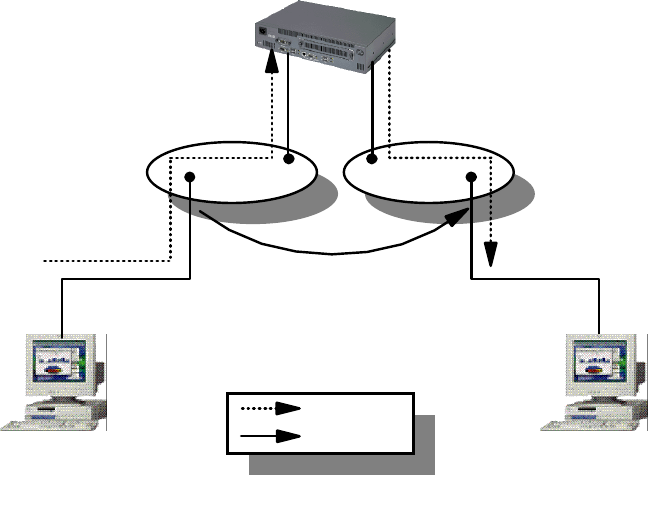

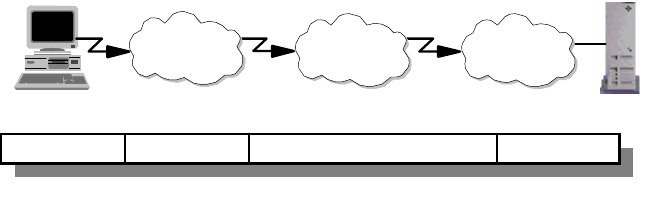

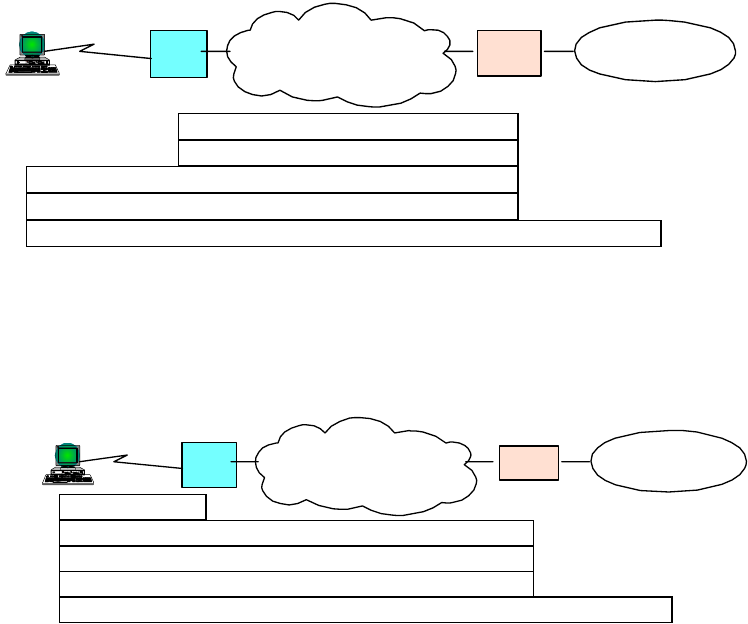

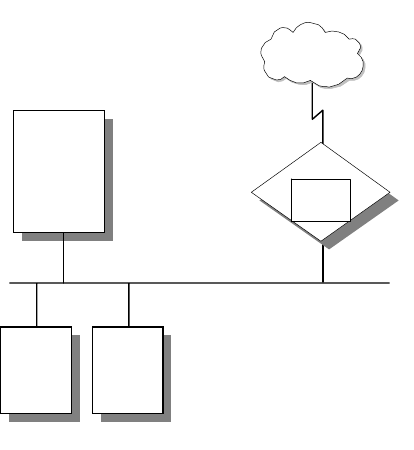

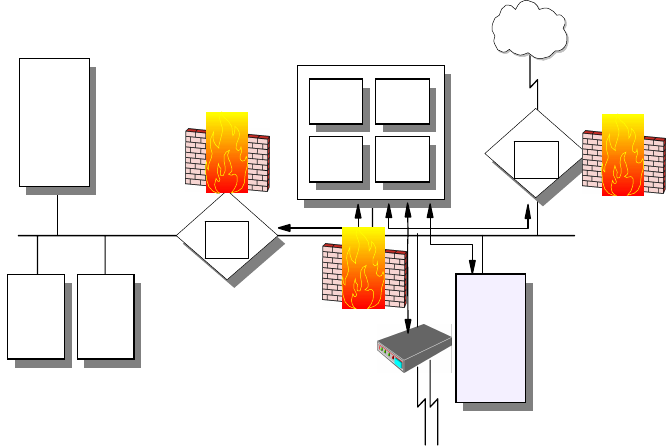

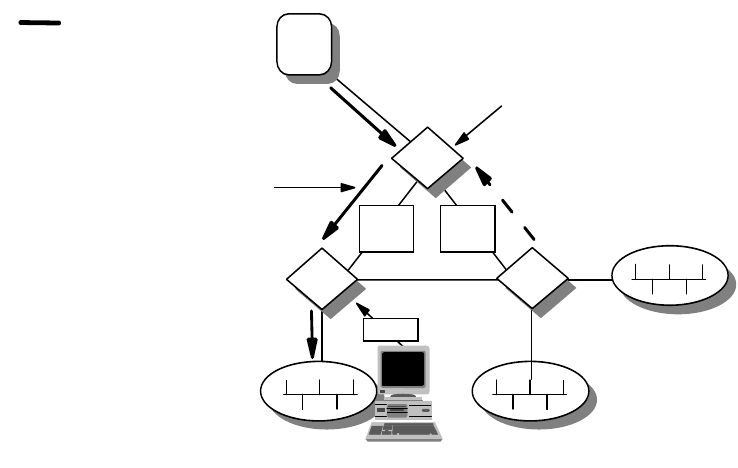

Figure 3. IP Network Design Implementation and Change

A good IP network design also includes detailed documentation of the network for

future reference. A well designed IP network should be easy to implement, with

few surprises. It is always good to remember the

KISS

principle:

Keep It Simple,

Stupid!

1.1.5.1 The Design Methodology

The design methodology recommended for use in the design of an IP network is a

top-down design approach.

This technique of design loosely follows the TCP/IP stack. As seen in Figure 2 on

page 4, at the top of the stack lies the application layer. This is the first layer

considered when designing the IP network. The next two layers are the transport

and network layers with the final layer being the data link layer.

The design of an application is dictated by business requirements. The rules of

the business, the process flow, the security requirements and the expected

results all get translated into the application’s specification. These requirements

not only affect the design of the application but their influence permeates all the

way down to the lower layers.

Once the application layer requirements have been identified, the requirements

for the lower layers follow. For example, if the application layer has a program that

demands a guaranteed two-second response time for any network transaction,

the IP network design will need to take this into consideration and maybe place

performance optimization as high priority. The link layer will need to be designed

in such a manner that this requirement is met. Using a flat network model for the

link layer with a few hundred Windows-based PCs may not be an ideal design in

this case.

Once the design of the IP network has been completed with regard to the

application layer, the implementation of the network is carried out.

Initial Design

Deployment

Commissioning

Design Change

2580C\CH3F21

8IP Network Design Guide

The design for the network infrastructure plays an important part, as it ultimately

affects the overall design. A good example of this is the modularity and scalability

of the overall IP network. The following are some basic considerations in

designing an IP network.

1.1.5.2 Overall Design Considerations

Although much could be said about design considerations that is beyond the

scope of this book, there are a few major points that you need to know:

• Scalability

A well designed network should be scalable, so as to grow with increasing

requirement. Introduction of new hosts, servers, or networks to the network

should not require a complete redesign of the network topology. The

topology chosen should be able to accommodate expansion due to

business requirements.

• Open Standards

The entire design and the components that build the network should be

based on open standards. Open standards imply flexibility, as there may be

a need to interconnect different devices from different vendors. Proprietary

features may be suitable to meet a short term requirement but in the long

run, they will limit choices as it will be difficult to find a common technology.

• Availability/Reliability

Business requirements assuredly demand a level of availability and

reliability of the network. A stock trading system based on a network that

guarantees transaction response times of three seconds is meaningless if

the network is down three out of seven days a week!

The mean time between failures (MTBF) of the components must be

considered when designing the network, as must the mean time to repair

(MTTR). Designing logical redundancy in the network is as important as

physical redundancy.

It is too late and costly to consider redundancy and reliability of a network

when you are already halfway through the implementation stage.

• Modularity

An important concept to adopt is the modular design approach in building a

network. Modularity divides a complex system into smaller, manageable

ones and makes implementation much easier to handle. Modularity also

ensures that a failure at a certain part of the network can be isolated so

that it will not bring down the entire network.

The expendability of a network is improved by implementing a modular

design. For example, adding a new network segment or a new application

to the network will not require re-addressing all the hosts on the network if

the network has been implemented in a modular design.

• Security

The security of an organization’s network is an important aspect in a

design, especially when the network is going to interface with the Internet.

Considering security risks and taking care of them in the design stage of

the IP network is essential for complete certitude in the network.

Considering security at a later stage leaves the network open to attack until

Introduction 9

all security holes are closed, a reactive rather than proactive approach that

sometimes is very costly. Although new security holes may be found as the

hackers get smarter, the basic known security problems can easily be

incorporated into the design stage.

• Network Management

IP network management should not be an afterthought of building a

network. Network management is important because it provides a way to

monitor the health of the network, to ascertain operating conditions, to

isolate faults and configure devices to effect changes.

Implementing a management framework should be integrated into the

design of the network from the beginning. Designing and implementing an

IP network and then trying to "fit" a management framework to the network

may cause unneccessary issues. A little proactivity in the design stage can

lead to a much easier implementation of management resources.

• Performance

There are two types of performance measures that should be considered

for the network. One is the throughput requirement and the other is the

response time. Throughput is how much data can be sent in the shortest

time possible, while response time is how long a user must wait before a

result is returned from the system.

Both of these factors need to be considered when designing the network. It

is not acceptable to design a network only to fail to meet the organization’s

requirements in the response times for the network. The scalability of the

network with respect to the performance requirements must also be

considered, as mentioned above.

• Economics

An IP network design that meets all of the requirements of the organization

but is 200% of the budget, may need to be reviewed.

Balancing cost and meeting requirements are perhaps the most difficult

aspects of a good network design. The essence is in the word compromise.

One may need to trade off some fancy features to meet the cost, while still

meeting the basic requirements.

1.1.5.3 Network Design Steps

Below is a generic rule-of-thumb approach to IP network design. It presents a

structured approach to analyzing and developing a network design to suit the

needs of an organization.

10 IP Network Design Guide

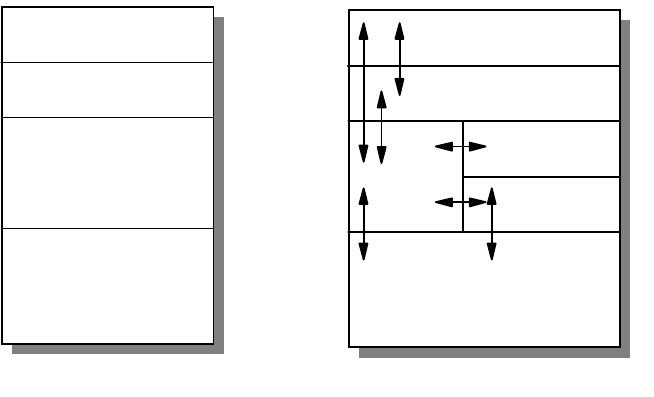

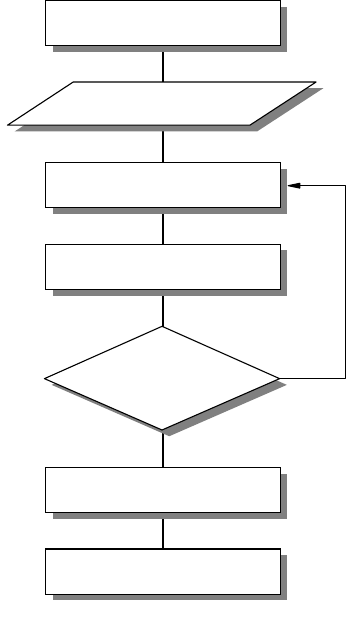

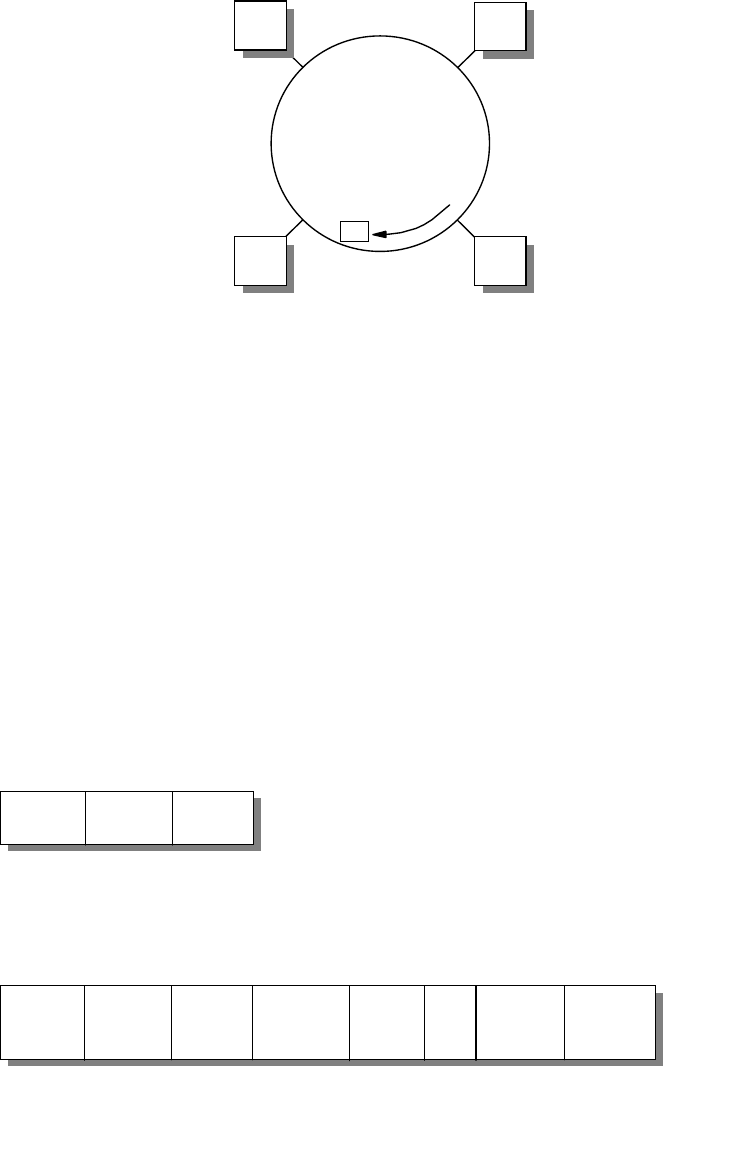

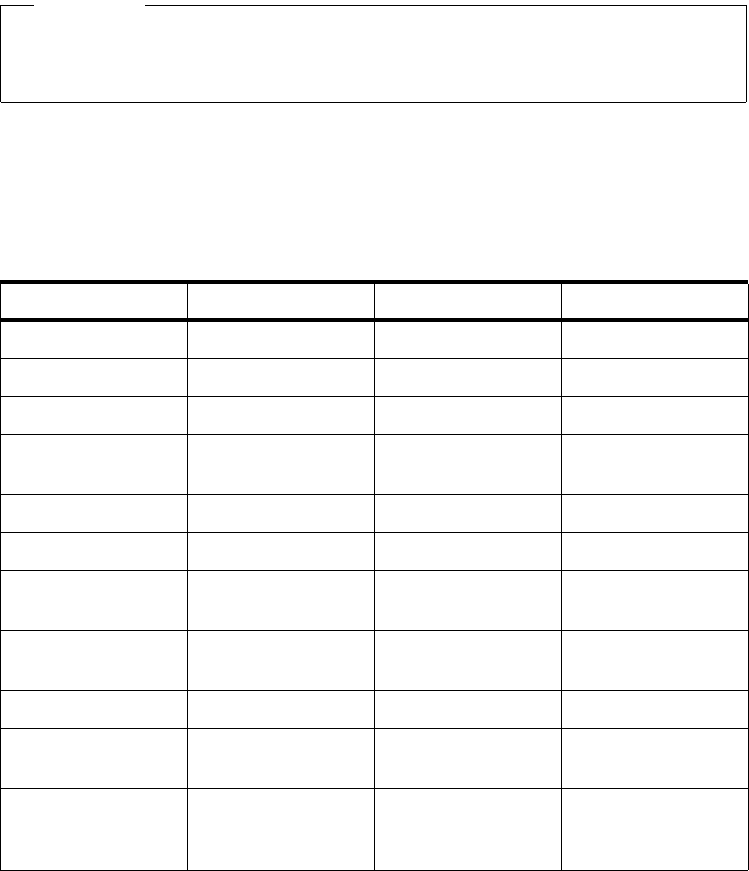

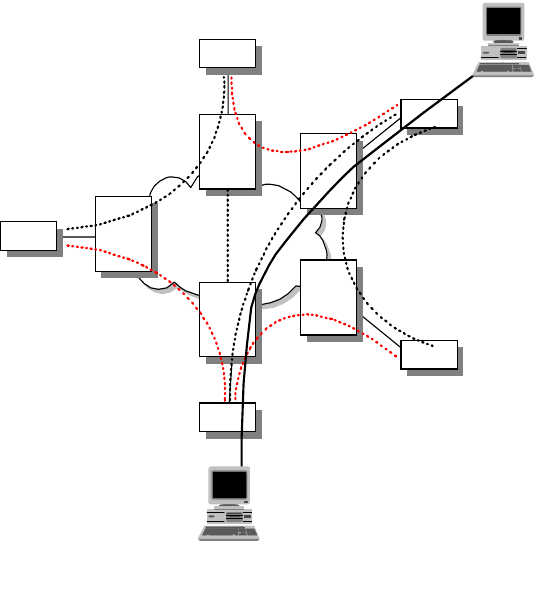

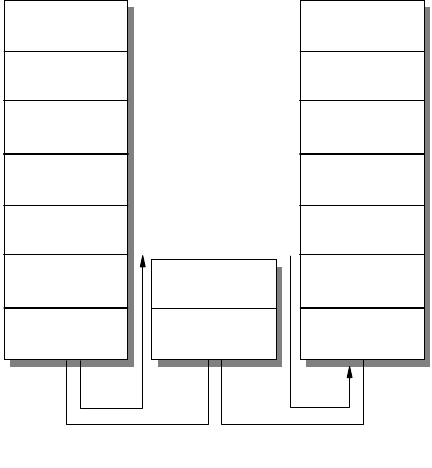

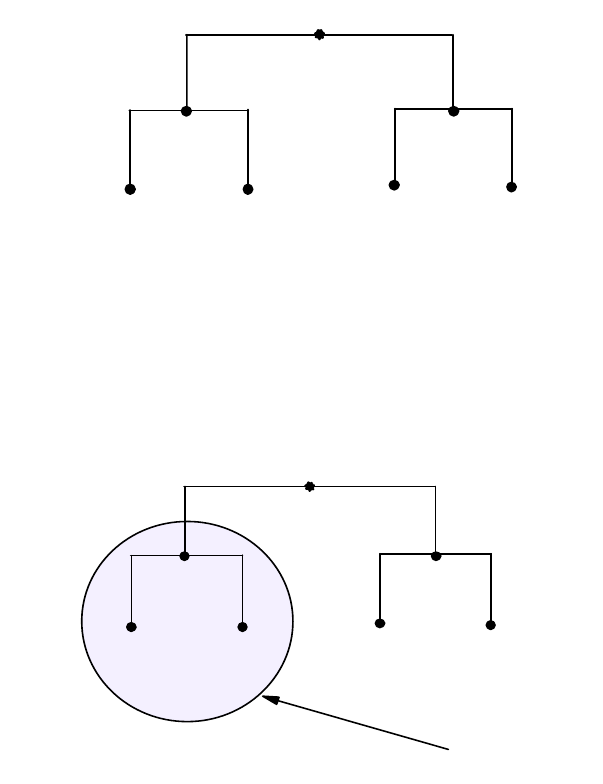

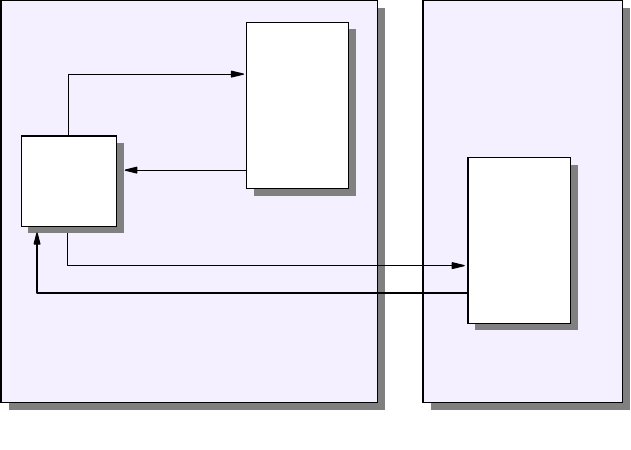

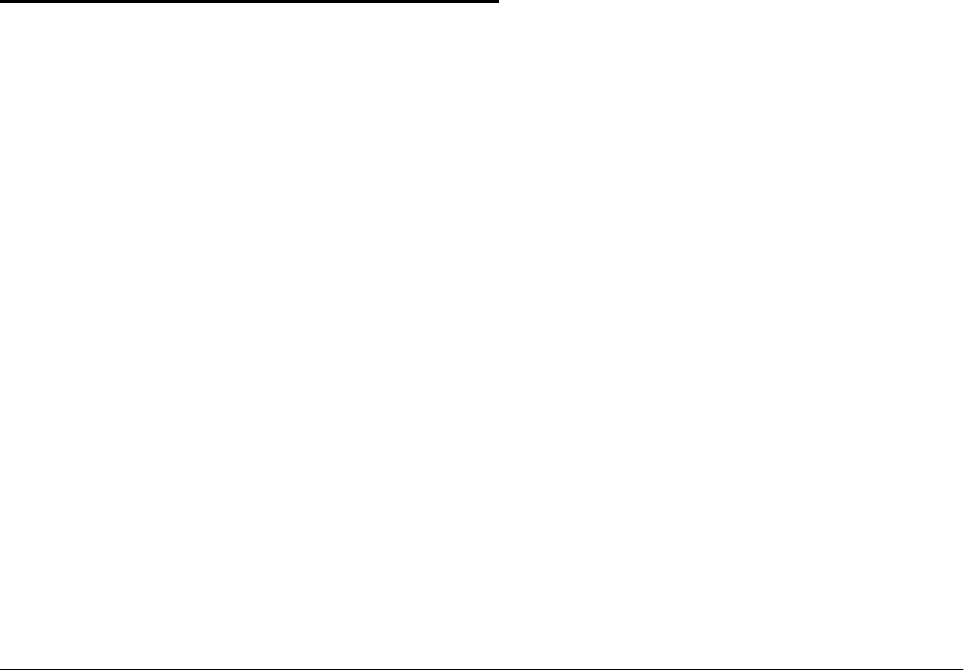

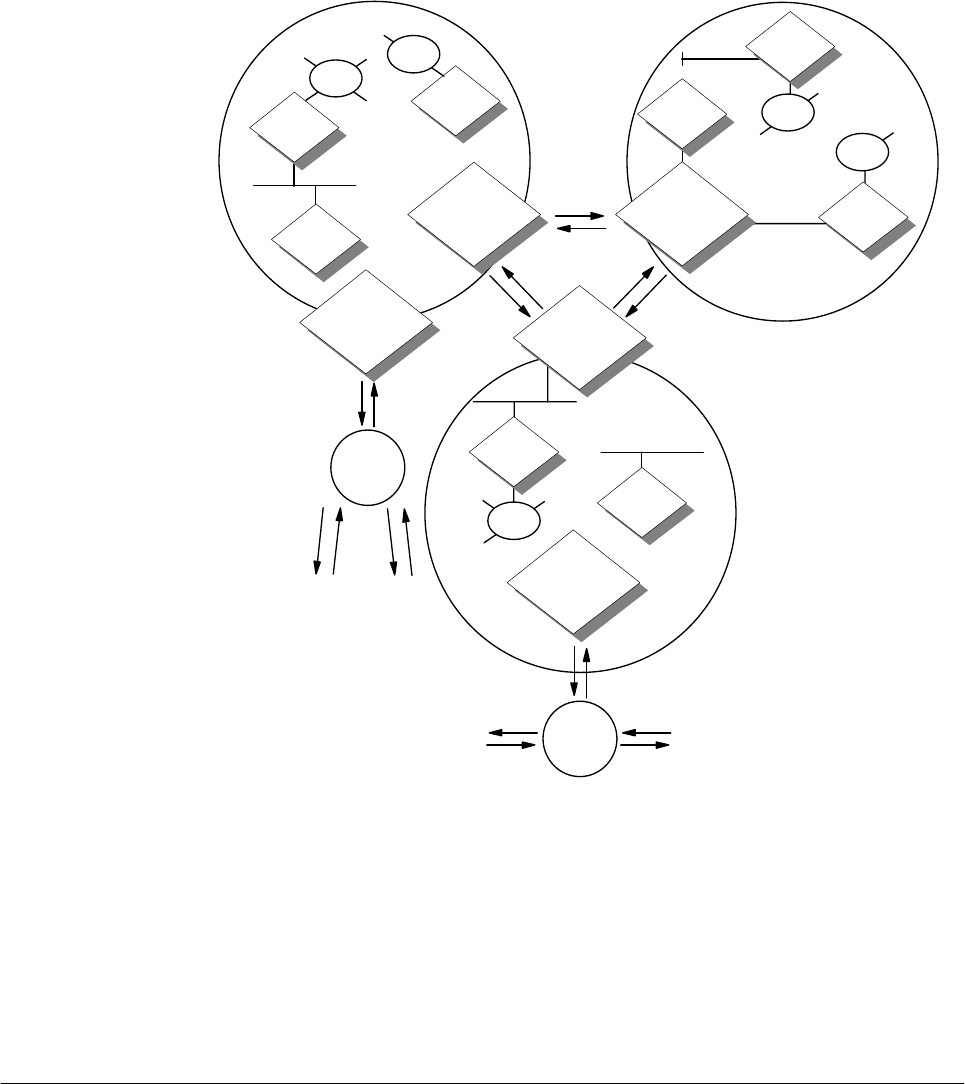

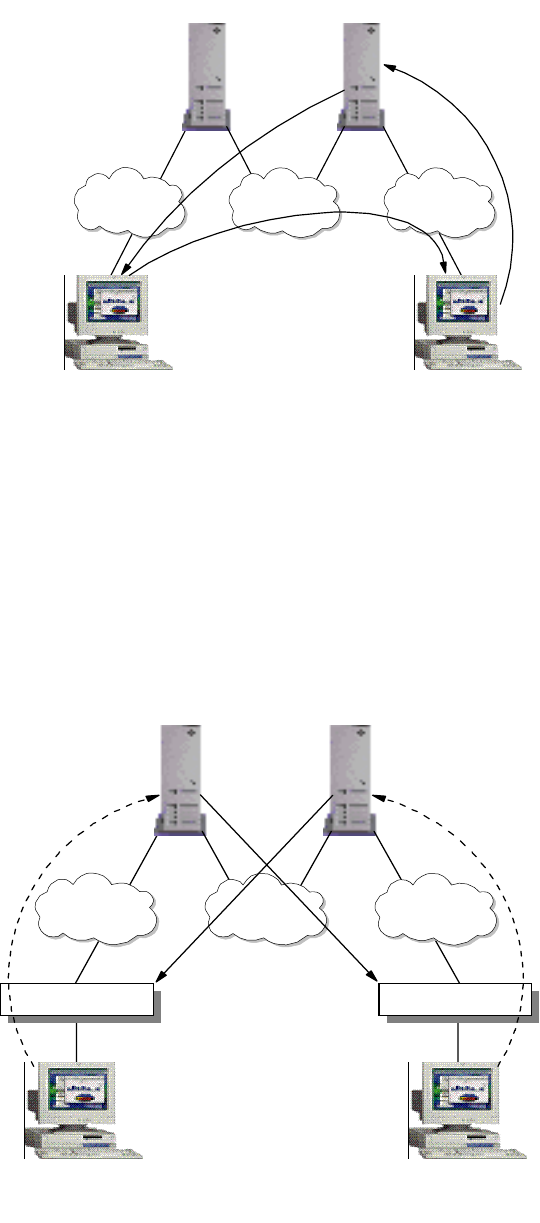

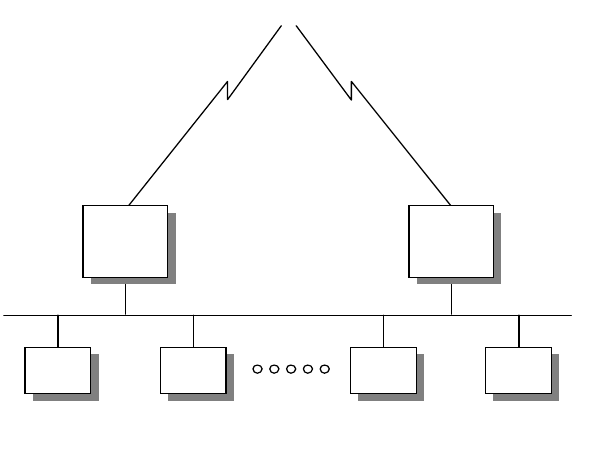

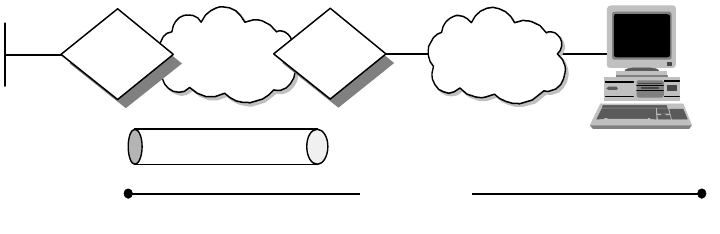

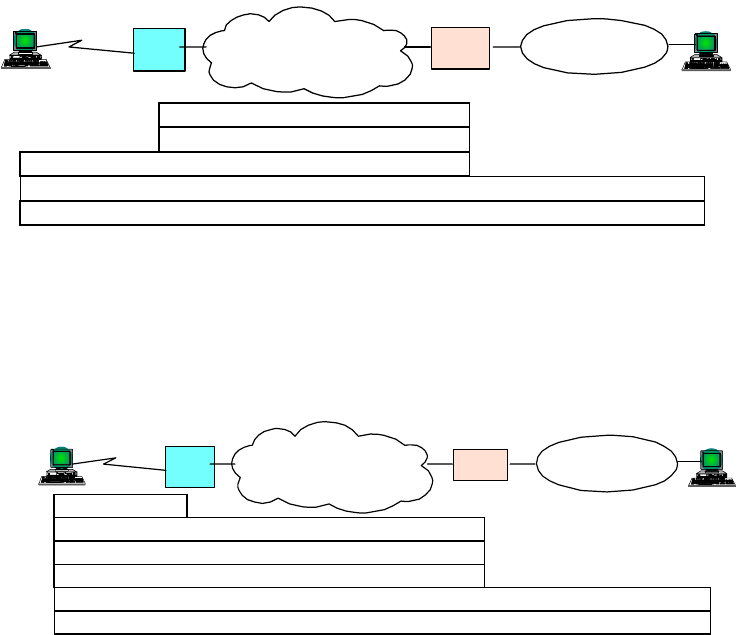

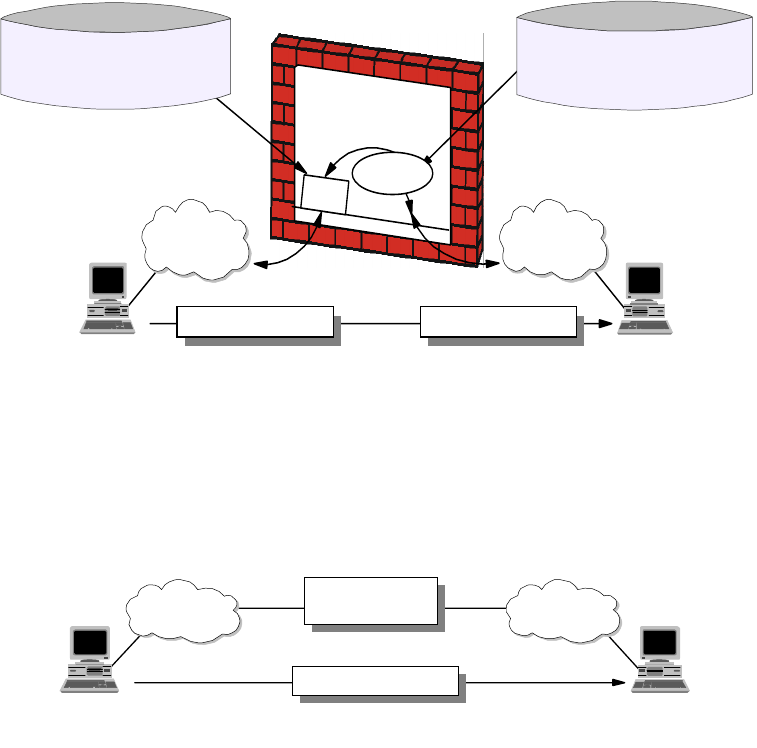

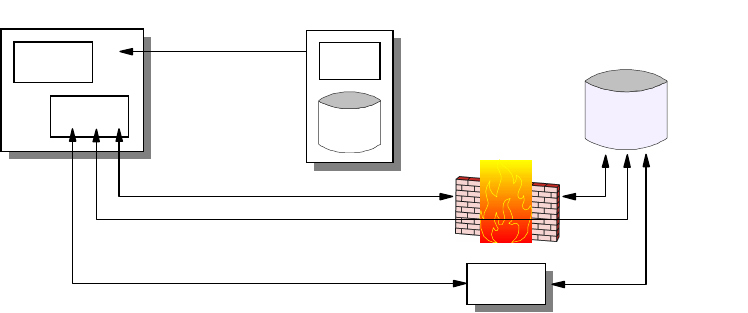

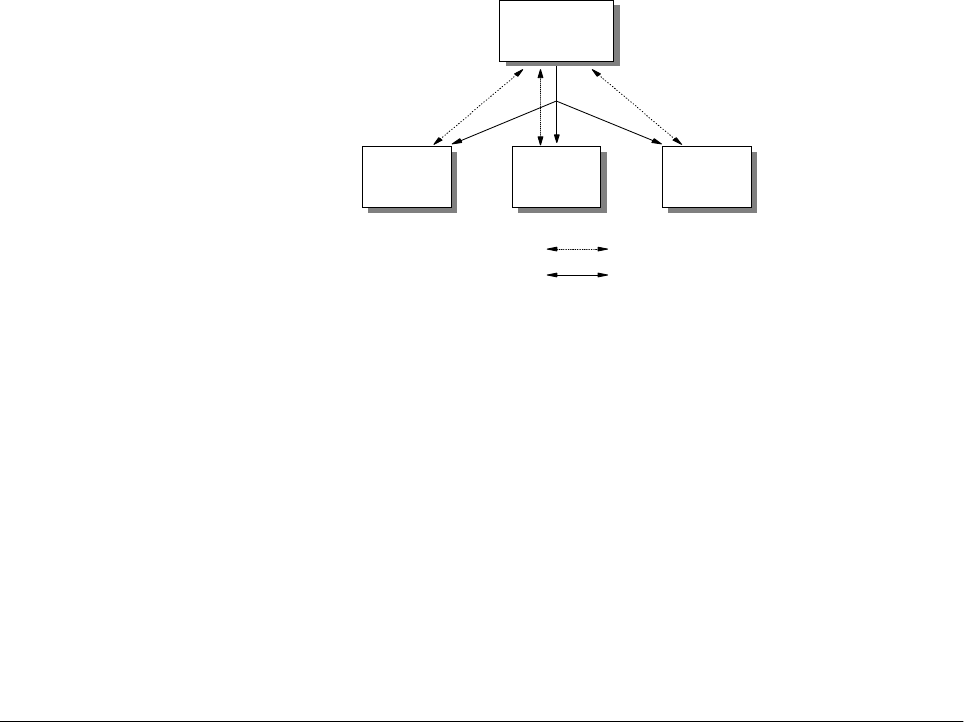

Figure 4. Network Design Steps

Network Objectives

What are the objectives of this IP network? What are the business requirements

that need to be satisfied? This step of the design process needs research and

can be time consuming. The following, among other things, should be considered:

• Who are the users of the IP network and what are their requirements?

• What applications must be supported?

• Does the IP network replace an existing communications system?

• What migration steps must be considered?

• What are the requirements as defined in 1.1.5.2, “Overall Design

Considerations” on page 8?

• Who is responsible for network management?

• Should the network be divided into more manageable segments?

• What is the life expectancy of the network?

• What is the budget?

Collecting Design Information

The information that is required for building the network depends on each

individual implementation. However, the main types of information required can

be deduced from Part 1.1.5.2, “Overall Design Considerations” on page 8.

Create Design Proposal

Network Objectives

Collect Design Information

Propose Configuration

Have all designs

been considered?

Make Selection

Move to Implementation

Y

N

2580C\CH3F24

Introduction 11

It is important to collect this information and spend time analyzing it to develop a

thorough understanding of the environment and limitations imposed upon the

design of the new IP network.

Create a Proposal or Specification

Upon analysis of the collected information and the objectives of the network, a

design proposal can be devised and later optimized. The design considerations

can be met with one goal overriding others. So the network can be:

• Optimized for performance

• Optimized for resilience

• Optimized for security

Once the design priorities have been identified the design can be created and

documented.

Review

The final stage in the design process is to review the design before it is

implemented. The design can be modified at this stage easily, before any

investment is made into infrastructure or development work. With this completed,

the implementation stage can be initiated.

1.2 Application Considerations

As presented in chapter one, the TCP/IP model’s highest layer is the application

layer. As the elements that populate this layer are defined by the business

requirements of the overall system, these components must be considered the

most important in the initial design considerations with a top-down design

methodology.

The type of applications that the network needs to support and the types of

network resources these applications require, must be taken into consideration

when designing the IP network. There are a number of these issues that must be

considered for the network design, some that are common to all applications,

while others pertain to a subset of applications. These issues will be defined and

elaborated.

Remember, building a complex ATM network to send plain text in a small

workgroup of 10 users is a waste of time and resources, unless you get them for

free!

1.2.1 Bandwidth Requirements

Different applications require varying amounts of network bandwidth. A simple

SMTP e-mail application does not have the same bandwidth requirement as a

Voice over IP application. Voice and data compression have not reached that

level yet.

It is obvious that the applications your network will need to support determine the

type of network you will finally design. It is not a good idea to design a network

without considering what applications you currently require, and what

applications your business needs will require your network to support in the

future.

12 IP Network Design Guide

1.2.2 Performance Requirements

The performance requirements of the users of the applications must be

considered. A user of the network may be willing to wait for a slow response from

an HTTP or FTP application, but they will not accept delays in a Voice over IP

application - it’s hard to understand what someone is saying when it’s all broken

up.

The delay in the delivery of network traffic also needs to be considered. Long

delays will not be acceptable to applications that stream data, such as video over

IP applications.

The accuracy with which the network is able to provide data to the application is

also relevant to the network design. Differing infrastructure designs provide

differing levels of accuracy from the network.

1.2.3 Protocols Required

The TCP/IP application layer supports an ever increasing number of protocols.

The basic choice in protocol for applications is whether or not the application will

use TCP or UDP. TCP delivers a reliable connection-oriented service. UDP

delivers faster network response by eliminating the overhead of the TCP header;

however, it loses TCP’s reliability, flow control and error recovery features.

It is clear that it depends on the application’s service focus as to which protocol it

will use. An FTP application, for example, will not use UDP. FTP uses TCP to

provide reliable end-to-end connections. The extra speed provided by using UDP

does not outweigh the reliability offered by TCP.

The Trivial File Transfer Protocol (TFTP), however, although similar to FTP, is

based on a UDP transport layer. As TFTP transactions are generally small in size

and very simple, the reliability of the TCP protocol is outweighed by the added

speed provided by UDP. Then why use FTP? Although TFTP is more efficient

than FTP over a local network, it is not good for transfers across the Internet as

its speed is rendered ineffective due to its lack of reliability. Unlike FTP

applications TFTP applications are also insecure.

1.2.4 Quality of Service/Type of Service (QoS/ToS)

Quality of Service (QoS) and Type of Service (ToS) arise simply for one reason:

some users’ data is more "important" then others. And there is a need to provide

these users with "premium" service, just like a VIP queue at the airport.

The requirement for QoS and ToS that gets incorporated into an application also

has implications for the network design. The connecting devices, the routers and

switches, have to be able to ensure "premium" delivery of information so as to

support the requirement of the application.

1.2.4.1 Real-Time Applications

Some applications, such as a Voice over IP or an ordering system, need to be

real time. The need for real-time applications necessitates a network that can

guarantee a level of service.

A real-time application will need to implement its own flow control and error

checking if it is to use UDP as a transport protocol. The requirements of real-time

Introduction 13

applications will also influence the type of network infrastructure implemented. An

ATM network can inherently fulfill the requirements, however, a shared Ethernet

network will not fulfill the requirement.

1.2.5 Sensitivity to Packet Loss and Delay

An application’s sensitivity to packet loss and delay can have dramatic effects on

the user. The network must provide reliable packet delivery for these applications.

For example, a real-time application, with little buffering, does not tolerate packet

delivery delays, let alone packet loss! Voice over IP is one example of such an

application, as opposed to an application such as Web browsing.

1.2.6 Multicast

Multicasting has been proven to be a good way of saving network bandwidth.

That is true, if it has been implemented properly and did not break the network in

the first place.

Getting multicasting to work involves getting all the connecting devices, such as

routers and switches, the applications, the clients’ operating systems, and the

servers to work hand in hand. Multicasting will not work if any of these

subsystems cannot meet the requirement, or if they have severe limitations.

1.2.7 Proxy-Enabled

The ability of an application protocol to be proxyed has implications on the

bandwidth requirements and the security of the network.

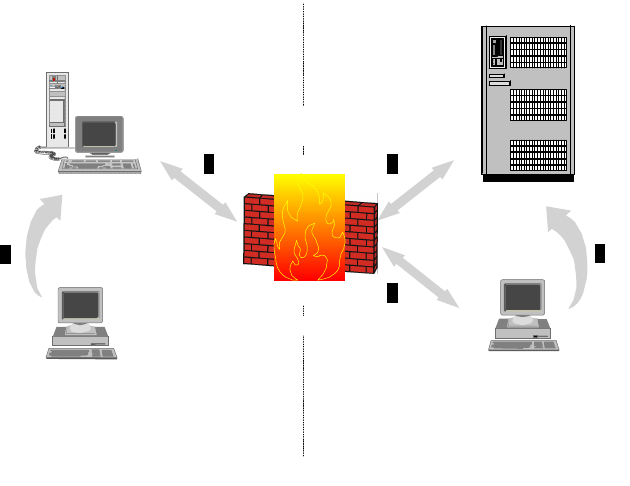

An HTTP application will be easily manageable when a firewall is installed for

security, as a proxy service can be placed outside the firewall in a demilitarized

zone to serve HTTP traffic through the firewall to the application.

An application based upon the TELNET protocol will not have such an easy time

as the HTTP application. The TELNET protocol does not support proxying of its

traffic. Thus, a firewall must remain open on this port, the application must use a

SOCKS server or the application cannot communicate through the firewall. You

either have a nonworking application, an added server or a security hole.

1.2.8 Directory Needs

Various applications require directory services with the IP network. Directory

services include DNS, NIS, LDAP, X.500 and DCE, among others. The choice of

Directory services depends on the application support for these services. An

application based upon the ITU X.500 standard will not respond well to a network

with only DNS servers.

Some applications, such as those based upon the PING and TFTP protocols, do

not require directory services to function, although the difficulty in their use would

be greatly increased. Other applications require directory services implicitly, such

as e-mail applications based on the SMTP protocol.

14 IP Network Design Guide

1.2.9 Distributed Applications

Distributed applications will require a certain level of services from the IP

network. These services must be catered for by the network, so they must be

considered in the network design.

Take Distributed Computing Environment (DCE) as an example. It provides a

platform for the construction and use of distributed applications that relies on

services such as remote procedure call (RPC), the Cell Directory Service (CDS),

Global Directory Service (GDS), the Security Service, DCE Threads, Distributed

Time Service (DTS), and Distributed File Service (DFS). These services have to

made available through the network, so that collectively, they provide the basic

secure core for the DCE environment.

1.2.10 Scalability

Applications that require scalability must have a network capable to cater for their

future requirements, or be able to be upgraded for future requirements. If an

application is modular in design, the network must also be modular to enable it to

scale linearly with the application’s requirements.

1.2.11 Security

The security of applications is catered for by the underlying protocols or by the

application itself. If an application uses UDP for its transport layer, it cannot rely

on SSL for security, hence it must use its own encryption and provide its own

security needs.

Some applications that need to be run on the network do not have built-in security

features, or have not implemented standard security concepts such as SSL. An

application based on the TELNET protocol, for example, will invariably be

unsecure. If the network security requirements are such that a TELNET

application sending out unencrypted passwords is unacceptable, then either the

TELNET port must be closed on the firewall or the application must be rewritten.

Is it really worth rewriting your TELNET program?

1.3 Platform Considerations

An important step toward building an application is to find out the capabilities of

the end user’s workstation - the platform for the application. Some of the basic

questions that have to be answered include:

• Whether the workstation supports graphics or only text

• Whether the workstation meets the basic performance requirement in terms of

CPU speed, memory size, disk space and so on

• Whether the workstation has the connectivity options required

Of these questions, features and performance criteria are easy to understand and

information is readily obtainable. The connectivity option is a difficult one to

handle because it can involve many fact findings, some of which may not be

easily available. Many times, these tasks are learned through painful experience.

Take for example, the following questions that may need to be answered if we

want to develop an application that runs on TCP/IP:

• Does the workstation support a particular network interface card?

Introduction 15

• Does the network interface card support certain cabling options?

• Does the network interface card come with readily available drivers?

• Does the workstation’s operating system support the TCP/IP protocol?

• Does the workstation’s TCP/IP stack support subnetting?

• Does the operating system support the required APIs?

• Does the operating system support multiple default routes?

• Does the operating system support multiple DNS definitions?

• Does the operating system support multicasting?

• Does the operating system support advanced features such as Resource

Reservation Protocol (RSVP)?

Depending on the type of application, the above questions may not be relevant,

but they are definitely not exhaustive. You may say the above questions are trivial

and unimportant, but the impact could be far more reaching than just merely the

availability of functions. Here’s why:

• Does the workstation support a particular network interface card?

You may want to develop a multimedia application and make use of ATM’s

superb delivery capability. But the truth is, not all workstations support ATM

cards.

• Does the network interface card support certain cabling options?

Even if the network interface card is available, it may not have the required

cabling option such as a UTP port or multimode fiber SC connection port. You

may need a UTP port because UTP cabling is cost effective. But you may also

end up requiring fiber connectivity because you are the only employee located

in the attic and the connecting device is situated down in the basement.

• Does the network interface card come with readily available drivers?

Right, so we have the network interface card and it does support fiber SC

connections, but what about the bug that causes the workstation to hang? The

necessary patch may be six months away.

• Does the workstation’s operating system support the TCP/IP protocol?

It may seem an awkward question but there may be a different flavor of TCP/IP

implementation. A good example is the Classical IP (CIP) and LAN emulation

(LANE) implementation in an ATM network. Some operating systems may

support only CIP, while some may only support LANE.

• Does the workstation’s TCP/IP stack support subnetting?

In the world of IP address shortages, there may be a need to subdivide a

precious network subnet address further. And not all systems support

subnetting, especially the old systems.

• Does the operating system support the required APIs?

One popular way of developing a TCP/IP application is to use sockets

programming. But the TCP/IP stack on the user’s workstation may not fully

support it. This gets worse if there are many workstation types in the network,

each running different operating systems.

• Does the operating system support multiple default routes?

16 IP Network Design Guide

Unlike other systems, Windows 95 does not support multiple default routes. If

you are trying to develop a mission-critical application, this may be a serious

single point of failure. Some other workaround has to be implemented just to

alleviate this shortcoming.

• Does the operating system support multiple DNS definitions?

This one has the same impact as the point above. With clients capable of

having only one DNS definition, a high availability option may have to be built

into the DNS server. On the other hand, with clients capable of supporting

multiple DNS, the applications must be supported with APIs that can provide

such facilities.

• Does the operating system support multicasting?

There may be a need to deliver video to the users, and one of the ways is

through multicasting. Multicasting is a good choice as it conserves the network

bandwidth. But not all clients support multicasting.

• Does the operating system support advanced features such as RSVP?

Although standards like RSVP had been rectified for quite some time, many

operating systems do not support such features. For example, Windows 95

does not support RSVP.

1.4 Infrastructure Considerations

The applications need a transport mechanism to share information, to transmit

data or to send requests for some services. The transport mechanism is provided

by the underlying layer called the network infrastructure.

Building a network infrastructure can be a daunting task for the inexperienced.

Imagine building a network for a company with 100,000 employees and 90

different locations around the world. How do you go about building it? And where

do you begin?

As in the application consideration, building a network infrastructure involves

many decision making processes:

• What are the technologies out there?

• Which technology should I use for the LAN?

• Which technology should I use for the WAN?

• How do I put everything together?

• What is this thing called switching?

• How should the network design look?

• What equipment is required?

• How should it grow?

• How much does it cost?

• Can I manage it?

• Can I meet the deployment schedule?

• Is there a strategy to adopt?

Introduction 17

The Internet as we have it today grew out of circumstances. In the beginning, it

was not designed to be what it is today. In fact, there was not any planning or

design work done for it. It is merely a network of different networks put together,

and we have already seen its problems and limitations:

• It has almost run out of IP addresses

• It has performance problems

• It cannot readily support new generation applications

• It does not have redundancy

• It has security problems

• It has erratic response time

Work has begun on building the so-called New Generation Internet (NGI) and it is

supposed to be able to address most, if not all, of the problems that we are

experiencing with the Internet today. The NGI will be entirely different from what

we have today, as it is the first time that a systematic approach has been used to

design and build an Internet.

1.5 The Perfect Network

So, you may ask: Is there such a thing as a perfect network?

If a network manager is assigned to build a network for a company, he/she would

have to know how to avoid all the problems we have mentioned above. He or she

would use the best equipment and would have chosen the best networking

technologies available, but may still not have built a perfect network. Why?

The truth is, there is no such thing as a perfect network. A network design that is

based on today’s requirements may not address those of the future. Business

environments change, and this has a spiraling effect on the infrastructure.

Expectations of employees change, the users’ requirements change, and new

needs have to be addressed by the applications, and these in turn affect how all

the various systems tie up together, which means there is a change in the

network infrastructure involved. At best, what the network could do is to scale and

adapt to changes. Until the day it has reached its technical limitation, these are

the two criteria for a network to stay relevant; after that, a forklift operation may be

required.

Networks evolve over time. They have to do so to add value.

The above sections have highlighted that much work has to be done before an

application gets to be deployed to support a business’ needs. From the network

infrastructure to the various system designs, server deployments, security

considerations and types of client workstations, they all have to be well

coordinated. A minor error could mean back to the drawing board for the system

designer, and lots of money for the board of directors.

18 IP Network Design Guide

© Copyright IBM Corp. 1995 1999 19

Chapter 2. The Network Infrastructure

The network infrastructure is an important component in IP network design. It is

important simply because, at the end of the day, it is those wires that carry the

information. A well thought-out network infrastructure not only provides reliable

and fast delivery of that information, but it is also able to adapt to changes, and

grow as your business expands.

Building a network infrastructure is a complex task, requiring work such as

information gathering, planning, designing, and modeling. Though it deals mainly

with bits and bytes, it is more of an art than a science, because there are no fast

rules to building one.

When you build a network infrastructure, you look more at the lower three layers

of the OSI model, although many other factors need to be considered. There are

many technologies available that you can use to build a network, and the

challenge that a network manager faces, is to choose the correct one and the tool

that comes with it. It is important to know the implications of selecting a particular

technology, because the network manager ultimately decides what equipment is

required. When selecting a piece of networking equipment, it is important to know

at which layer of the OSI model the device functions. The functionality of the

equipment is important because it has to conform to certain standards, it has to

live up to the expectation of the application, and it has to perform tasks that are

required by the blue print - the network architecture.

The implementation of IP over different protocols depends on the mechanism

used for mapping the IP addresses to the hardware addresses, or MAC address,

at the data link layer of the OSI model. Some important aspects to consider when

using IP over any data link protocol are:

• Address mapping

Different data link layer protocols have different ways of mapping the IP

address to the hardware address. In the TCP/IP protocol suite, the Address

Resolution Protocol (ARP) is used for this purpose, and it works only in a

broadcast network.

• Encapsulation and overheads

The encapsulation of the IP packets into the data link layer packet and the

overheads incurred should be evaluated. Because different data link layer

protocols transport information differently, one may be more suitable than the

other.

• Routing

Routing is the process of transporting the IP packets from network to network,

and is an important component in an IP network. Many protocols are available

to provide the intelligence in the routing of the IP protocol, some with

sophisticated capabilities. The introduction of switching and some other data

link layer protocols has introduced the possibility of building switched paths in

the network that can bypass the routing process. This saves network

resources and reduces the network delay by eliminating the slower process of

routing that relies on software rather than on hardware or microcode switching

mechanisms.

• Maximum Transmission Unit (MTU)

20 IP Network Design Guide

Another parameter that should be considered in the IP implementation over

different data link layer protocols is the maximum transmission unit (MTU)

size. MTU size refers to the size of the data frame (in bytes) that has to be

transmitted to the destination through the network. A bigger MTU size means

one can send more information within a frame, thus requiring a lower total

number of packets to transmit a piece of information.

Different data link layers have different MTU sizes for the operation of the

network. If you connect two networks with different MTU sizes, then a process

called fragmentation takes place and this has to be performed by an external

device, such as a router. Fragmentation takes a larger packet and breaks it up

into smaller ones so that it can be sent onto the network with a smaller MTU

size. Fragmentation slows down the traffic flow and should be avoided as

much as possible.

2.1 Technology

Besides having wires to connect all the devices together, you have to decide the

way these devices connect, the protocol in which the devices should talk to each

other. Various technologies are available, each different from one another in

standards and implementation.

In this section, a few popular technologies are covered with each of their

characteristics highlighted. These technologies cover the LAN, WAN as well as

the remote access area. For a detailed description of each technology, please

refer to

Local Area Network Concepts and Products: LAN Architecture,

SG24-4753.

2.1.1 The Basics

It is important to understand the fundamentals of how data is transmitted in an IP

network, so that the difference in how the various technologies work can be better

understood.

Each workstation connects to the network through a network interface card (NIC)

that has a unique hardware address. At the physical layer, these workstations

communicate with each other through the hardware addresses. IP, being a higher

level protocol in the OSI model, communicates through a logical address, which

in this case, is the IP address. When one workstation with an IP address of

10.1.1.1 wishes to communicate with another with the address 10.1.1.2, the NIC

does not understand these logical addresses. Some mechanism has to be

implemented to translate the destination address 10.1.1.2 to a hardware address

that the NIC can understand.

2.1.1.1 Broadcast versus Non-Broadcast Network

Generally, all networks can be grouped into two categories: broadcast and

non-broadcast. The mechanism for mapping the logical address to the hardware

address is different for these two groups of networks. The best way of describing

a broadcast network is to imagine a teacher teaching a class. The teacher talks

and every student listens. An example of a non-broadcast network would be a

mail correspondence - at any time, only the sender and receiver of the mail know

what the conversation is about, the rest of the people don’t. Examples of

broadcast networks are Ethernet, token-ring and FDDI, while examples of

non-broadcast networks are frame relay and ATM.

The Network Infrastructure 21

It is important to differentiate the behaviors of both broadcast and non-broadcast

networks, so that the usage and limitation can both be taken into consideration in

the design of an IP network.

2.1.1.2 Address Resolution Protocol (ARP)

In a broadcast network, the Address Resolution Protocol (ARP) is used to

translate the IP address to the hardware address of the destination host. Every

workstation that runs the TCP/IP protocol keeps a table, called an ARP cache,

containing the mapping of the IP address to the hardware address of the hosts

with which it is communicating. When a destination entry is not found in the ARP

cache, a broadcast, called ARP broadcast, is sent out to the network. All

workstations that are located within the same network will receive this request

and go on to check the IP address entry in the request. If one of the workstations

recognizes its own IP address in this request, it will proceed to respond with an

ARP reply, indicating its hardware address. The originating workstation then

stores this information and commences to send data through the newly learned

hardware address.

ARP provides a simple and effective mechanism for mapping an IP address to a

hardware address. However, in a large network, especially in a bridged

environment, a phenomenon known as a broadcast storm can occur if

workstations misbehave, assuming hundreds of workstations are connected to a

LAN, and ARP is used to resolve the address mapping issue. If the workstation’s

ARP cache is too small, it means the workstation has to send more broadcasts to

find out the hardware address of the destination. Having hundreds of

workstations continuously sending out ARP broadcasts would soon render the

LAN useless because nobody can send any data.

For a detailed description of ARP, please refer to

TCP/IP Tutorial and Technical

Overview,

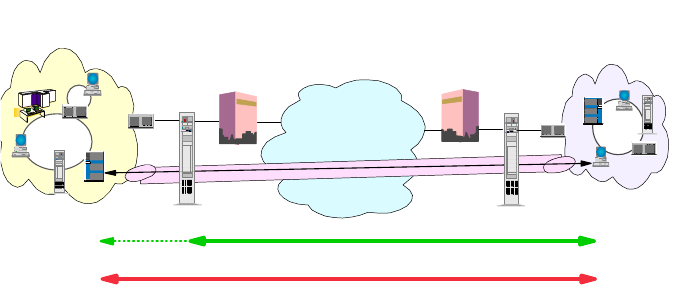

GG24-3376.