Learning Tensor Flow. A Guide To Building Deep Systems, Tom Hope, Yehezkel S. Resheff,

User Manual:

Open the PDF directly: View PDF ![]() .

.

Page Count: 230 [warning: Documents this large are best viewed by clicking the View PDF Link!]

- Contents

- Preface

- Introduction

- Up & Running with TensorFlow

- TensorFlow Basics

- Convolutional Neural Networks

- Text & Sequences & Visualization

- Word Vectors, Advanced RNN & embedding Visualization

- TensorFlow Abstractions & Simplification

- Queues Threads & Reading Data

- Distributed TensorFlow

- Exporting & Serving Models

- Model Construction & TensorFlow Serving

- Index

Tom Hope, Yehezkel S. Reshe, and Itay Lieder

Learning TensorFlow

A Guide to Building Deep Learning Systems

Boston Farnham Sebastopol Tokyo

Beijing Boston Farnham Sebastopol Tokyo

Beijing

978-1-491-97851-1

[LSI]

Learning TensorFlow

August 2017: First Edition

Revision History for the First Edition

2017-08-04: First Release

2017-09-15: Second Release

by Tom Hope, Yehezkel S. Resheff, and Itay Lieder

Copyright © 2017 Tom Hope, Itay Lieder, and Yehezkel S. Resheff. All rights reserved.

Printed in the United States of America

Preface. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . vii

1. Introduction. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 1

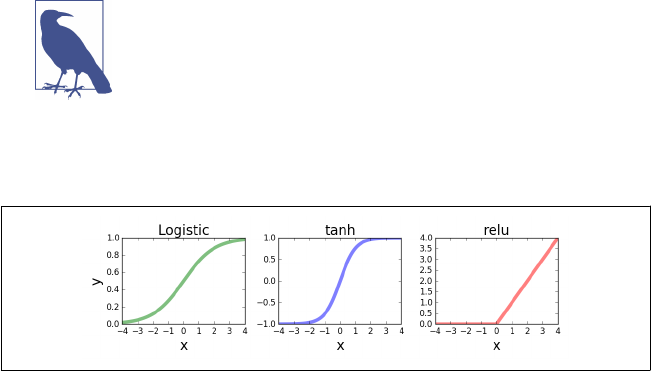

Going Deep 1

Using TensorFlow for AI Systems 2

TensorFlow: What’s in a Name? 5

A High-Level Overview 6

Summary 8

2. Go with the Flow: Up and Running with TensorFlow. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 9

Installing TensorFlow 9

Hello World 11

MNIST 13

Softmax Regression 14

Summary 21

3. Understanding TensorFlow Basics. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 23

Computation Graphs 23

What Is a Computation Graph? 23

The Benefits of Graph Computations 24

Graphs, Sessions, and Fetches 24

Creating a Graph 25

Creating a Session and Running It 26

Constructing and Managing Our Graph 27

Fetches 29

Flowing Tensors 30

Nodes Are Operations, Edges Are Tensor Objects 30

Data Types 32

Contents

Tensor Arrays and Shapes 33

Names 37

Variables, Placeholders, and Simple Optimization 38

Variables 38

Placeholders 39

Optimization 40

Summary 49

4. Convolutional Neural Networks. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 51

Introduction to CNNs 51

MNIST: Take II 53

Convolution 54

Pooling 56

Dropout 57

The Model 57

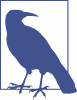

CIFAR10 61

Loading the CIFAR10 Dataset 62

Simple CIFAR10 Models 64

Summary 68

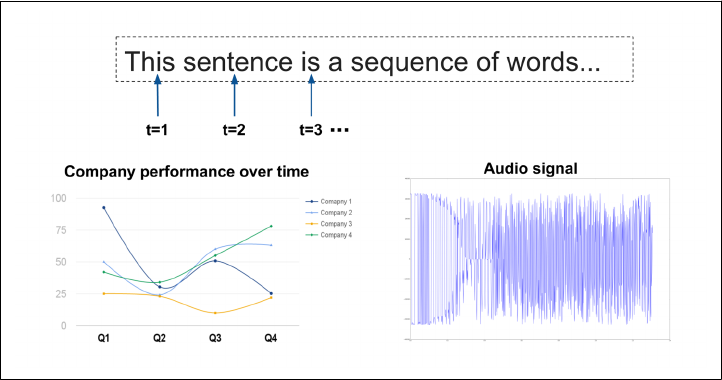

5. Text I: Working with Text and Sequences, and TensorBoard Visualization. . . . . . . . . . . 69

The Importance of Sequence Data 69

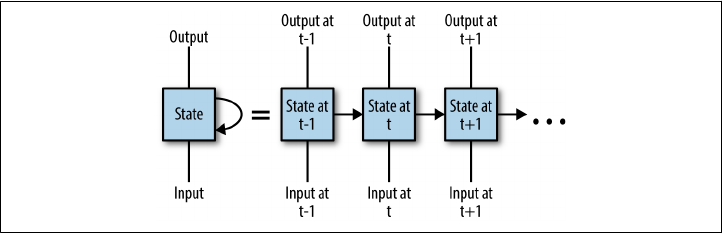

Introduction to Recurrent Neural Networks 70

Vanilla RNN Implementation 72

TensorFlow Built-in RNN Functions 82

RNN for Text Sequences 84

Text Sequences 84

Supervised Word Embeddings 88

LSTM and Using Sequence Length 89

Training Embeddings and the LSTM Classifier 91

Summary 93

6. Text II: Word Vectors, Advanced RNN, and Embedding Visualization. . . . . . . . . . . . . . . 95

Introduction to Word Embeddings 95

Word2vec 97

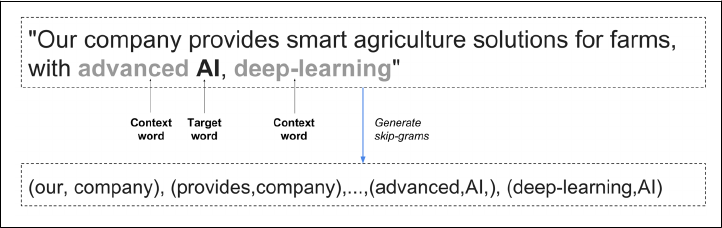

Skip-Grams 98

Embeddings in TensorFlow 100

The Noise-Contrastive Estimation (NCE) Loss Function 101

Learning Rate Decay 101

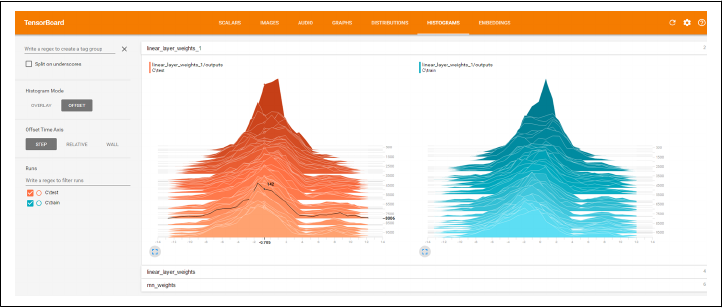

Training and Visualizing with TensorBoard 102

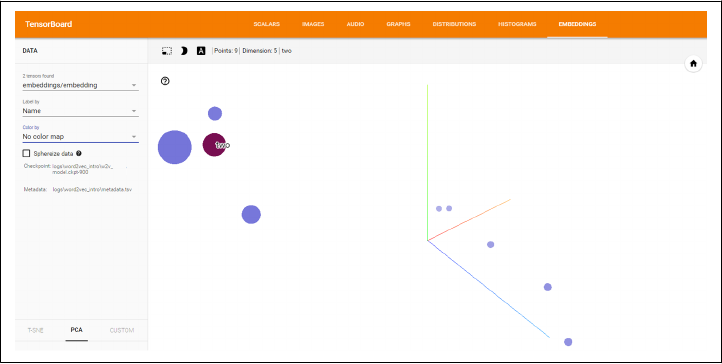

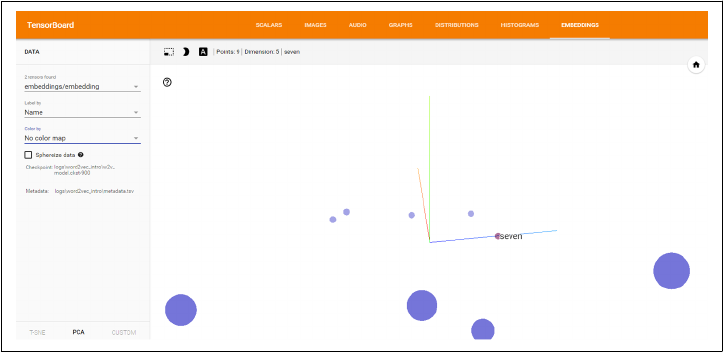

Checking Out Our Embeddings 103

Pretrained Embeddings, Advanced RNN 105

Pretrained Word Embeddings 106

Bidirectional RNN and GRU Cells 110

Summary 112

7. TensorFlow Abstractions and Simplications. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 113

Chapter Overview 113

High-Level Survey 115

contrib.learn 117

Linear Regression 118

DNN Classifier 120

FeatureColumn 123

Homemade CNN with contrib.learn 128

TFLearn 131

Installation 131

CNN 131

RNN 134

Keras 136

Pretrained models with TF-Slim 143

Summary 151

8. Queues, Threads, and Reading Data. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 153

The Input Pipeline 153

TFRecords 154

Writing with TFRecordWriter 155

Queues 157

Enqueuing and Dequeuing 157

Multithreading 159

Coordinator and QueueRunner 160

A Full Multithreaded Input Pipeline 162

tf.train.string_input_producer() and tf.TFRecordReader() 164

tf.train.shuffle_batch() 164

tf.train.start_queue_runners() and Wrapping Up 165

Summary 166

9. Distributed TensorFlow. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 167

Distributed Computing 167

Where Does the Parallelization Take Place? 168

What Is the Goal of Parallelization? 168

TensorFlow Elements 169

tf.app.flags 169

Clusters and Servers 170

Replicating a Computational Graph Across Devices 171

Managed Sessions 171

Device Placement 172

Distributed Example 173

Summary 179

10. Exporting and Serving Models with TensorFlow. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 181

Saving and Exporting Our Model 181

Assigning Loaded Weights 182

The Saver Class 185

Introduction to TensorFlow Serving 191

Overview 192

Installation 193

Building and Exporting 194

Summary 201

A. Tips on Model Construction and Using TensorFlow Serving. . . . . . . . . . . . . . . . . . . . . . . 203

Index. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 221

Preface

Deep learning has emerged in the last few years as a premier technology for building

intelligent systems that learn from data. Deep neural networks, originally roughly

inspired by how the human brain learns, are trained with large amounts of data to

solve complex tasks with unprecedented accuracy. With open source frameworks

making this technology widely available, it is becoming a must-know for anybody

involved with big data and machine learning.

TensorFlow is currently the leading open source software for deep learning, used by a

rapidly growing number of practitioners working on computer vision, natural lan‐

guage processing (NLP), speech recognition, and general predictive analytics.

This book is an end-to-end guide to TensorFlow designed for data scientists, engi‐

neers, students, and researchers. The book adopts a hands-on approach suitable for a

broad technical audience, allowing beginners a gentle start while diving deep into

advanced topics and showing how to build production-ready systems.

In this book you will learn how to:

1. Get up and running with TensorFlow, rapidly and painlessly.

2. Use TensorFlow to build models from the ground up.

3. Train and understand popular deep learning models for computer vision and

NLP.

4. Use extensive abstraction libraries to make development easier and faster.

5. Scale up TensorFlow with queuing and multithreading, training on clusters, and

serving output in production.

6. And much more!

This book is written by data scientists with extensive R&D experience in both indus‐

try and academic research. The authors take a hands-on approach, combining practi‐

cal and intuitive examples, illustrations, and insights suitable for practitioners seeking

to build production-ready systems, as well as readers looking to learn to understand

and build flexible and powerful models.

Prerequisites

This book assumes some basic Python programming know-how, including basic

familiarity with the scientific library NumPy.

Machine learning concepts are touched upon and intuitively explained throughout

the book. For readers who want to gain a deeper understanding, a reasonable level of

knowledge in machine learning, linear algebra, calculus, probability, and statistics is

recommended.

Conventions Used in This Book

The following typographical conventions are used in this book:

Italic

Indicates new terms, URLs, email addresses, filenames, and file extensions.

Constant width

Used for program listings, as well as within paragraphs to refer to program ele‐

ments such as variable or function names, databases, data types, environment

variables, statements, and keywords.

Constant width bold

Shows commands or other text that should be typed literally by the user.

Constant width italic

Shows text that should be replaced with user-supplied values or by values deter‐

mined by context.

Using Code Examples

Supplemental material (code examples, exercises, etc.) is available for download at

https://github.com/Hezi-Reshe/Oreilly-Learning-TensorFlow.

This book is here to help you get your job done. In general, if example code is offered

with this book, you may use it in your programs and documentation. You do not

need to contact us for permission unless you’re reproducing a significant portion of

the code. For example, writing a program that uses several chunks of code from this

book does not require permission. Selling or distributing a CD-ROM of examples

from O’Reilly books does require permission. Answering a question by citing this

book and quoting example code does not require permission. Incorporating a signifi‐

cant amount of example code from this book into your product’s documentation does

require permission.

CHAPTER 1

Introduction

This chapter provides a high-level overview of TensorFlow and its primary use:

implementing and deploying deep learning systems. We begin with a very brief intro‐

ductory look at deep learning. We then present TensorFlow, showcasing some of its

exciting uses for building machine intelligence, and then lay out its key features and

properties.

Going Deep

From large corporations to budding startups, engineers and data scientists are col‐

lecting huge amounts of data and using machine learning algorithms to answer com‐

plex questions and build intelligent systems. Wherever one looks in this landscape,

the class of algorithms associated with deep learning have recently seen great success,

often leaving traditional methods in the dust. Deep learning is used today to under‐

stand the content of images, natural language, and speech, in systems ranging from

mobile apps to autonomous vehicles. Developments in this field are taking place at

breakneck speed, with deep learning being extended to other domains and types of

data, like complex chemical and genetic structures for drug discovery and high-

dimensional medical records in public healthcare.

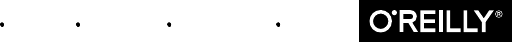

Deep learning methods—which also go by the name of deep neural networks—were

originally roughly inspired by the human brain’s vast network of interconnected neu‐

rons. In deep learning, we feed millions of data instances into a network of neurons,

teaching them to recognize patterns from raw inputs. The deep neural networks take

raw inputs (such as pixel values in an image) and transform them into useful repre‐

sentations, extracting higher-level features (such as shapes and edges in images) that

capture complex concepts by combining smaller and smaller pieces of information to

solve challenging tasks such as image classification (Figure 1-1). The networks auto‐

matically learn to build abstract representations by adapting and correcting them‐

1

selves, fitting patterns observed in the data. The ability to automatically construct

data representations is a key advantage of deep neural nets over conventional

machine learning, which typically requires domain expertise and manual feature

engineering before any “learning” can occur.

Figure 1-1. An illustration of image classication with deep neural networks. e net‐

work takes raw inputs (pixel values in an image) and learns to transform them into use‐

ful representations, in order to obtain an accurate image classication.

This book is about Google’s framework for deep learning, TensorFlow. Deep learning

algorithms have been used for several years across many products and areas at Goo‐

gle, such as search, translation, advertising, computer vision, and speech recognition.

TensorFlow is, in fact, a second-generation system for implementing and deploying

deep neural networks at Google, succeeding the DistBelief project that started in

2011.

TensorFlow was released to the public as an open source framework with an Apache

2.0 license in November 2015 and has already taken the industry by storm, with

adoption going far beyond internal Google projects. Its scalability and flexibility,

combined with the formidable force of Google engineers who continue to maintain

and develop it, have made TensorFlow the leading system for doing deep learning.

Using TensorFlow for AI Systems

Before going into more depth about what TensorFlow is and its key features, we will

briefly give some exciting examples of how TensorFlow is used in some cutting-edge

real-world applications, at Google and beyond.

2 | Chapter 1: Introduction

Pre-trained models: state-of-the-art computer vision for all

One primary area where deep learning is truly shining is computer vision. A funda‐

mental task in computer vision is image classification—building algorithms and sys‐

tems that receive images as input, and return a set of categories that best describe

them. Researchers, data scientists, and engineers have designed advanced deep neural

networks that obtain highly accurate results in understanding visual content. These

deep networks are typically trained on large amounts of image data, taking much

time, resources, and effort. However, in a growing trend, researchers are publicly

releasing pre-trained models—deep neural nets that are already trained and that

users can download and apply to their data (Figure 1-2).

Figure 1-2. Advanced computer vision with pre-trained TensorFlow models.

TensorFlow comes with useful utilities allowing users to obtain and apply cutting-

edge pretrained models. We will see several practical examples and dive into the

details throughout this book.

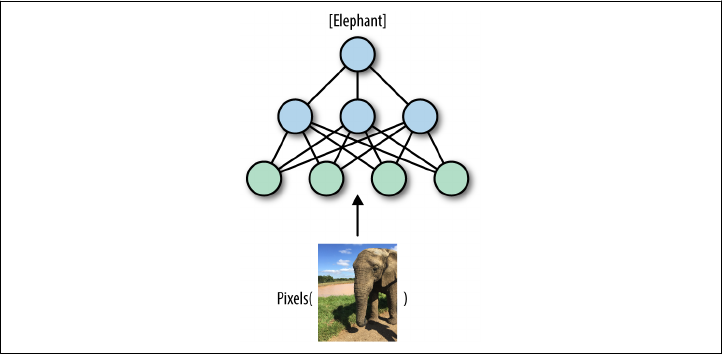

Generating rich natural language descriptions for images

One exciting area of deep learning research for building machine intelligence systems

is focused on generating natural language descriptions for visual content (Figure 1-3).

A key task in this area is image captioning—teaching the model to output succinct

and accurate captions for images. Here too, advanced pre-trained TensorFlow models

that combine natural language understanding with computer vision are available.

Going Deep | 3

Figure 1-3. Going from images to text with image captioning (illustrative example).

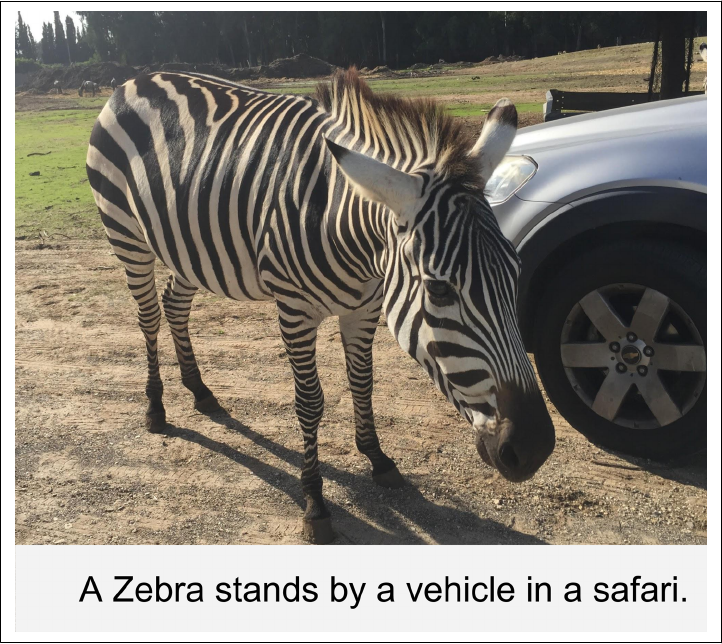

Text summarization

Natural language understanding (NLU) is a key capability for building AI systems.

Tremendous amounts of text are generated every day: web content, social media,

news, emails, internal corporate correspondences, and many more. One of the most

sought-after abilities is to summarize text, taking long documents and generating

succinct and coherent sentences that extract the key information from the original

texts (Figure 1-4). As we will see later in this book, TensorFlow comes with powerful

features for training deep NLU networks, which can also be used for automatic text

summarization.

4 | Chapter 1: Introduction

Figure 1-4. An illustration of smart text summarization.

TensorFlow: What’s in a Name?

Deep neural networks, as the term and the illustrations we’ve shown imply, are all

about networks of neurons, with each neuron learning to do its own operation as part

of a larger picture. Data such as images enters this network as input, and flows

through the network as it adapts itself at training time or predicts outputs in a

deployed system.

Tensors are the standard way of representing data in deep learning. Simply put, ten‐

sors are just multidimensional arrays, an extension of two-dimensional tables (matri‐

ces) to data with higher dimensionality. Just as a black-and-white (grayscale) images

are represented as “tables” of pixel values, RGB images are represented as tensors

(three-dimensional arrays), with each pixel having three values corresponding to red,

green, and blue components.

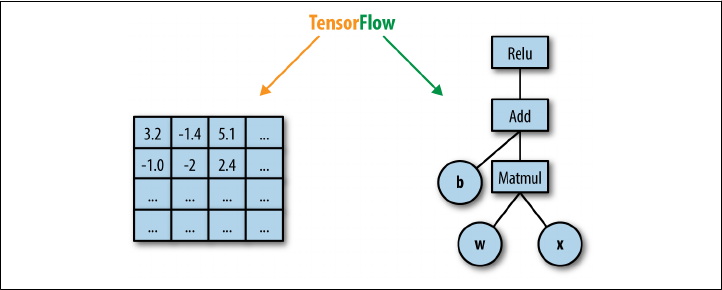

In TensorFlow, computation is approached as a dataow graph (Figure 1-5). Broadly

speaking, in this graph, nodes represent operations (such as addition or multiplica‐

tion), and edges represent data (tensors) flowing around the system. In the next chap‐

ters, we will dive deeper into these concepts and learn to understand them with many

examples.

TensorFlow: What’s in a Name? | 5

Figure 1-5. A dataow computation graph. Data in the form of tensors ows through a

graph of computational operations that make up our deep neural networks.

A High-Level Overview

TensorFlow, in the most general terms, is a software framework for numerical com‐

putations based on dataflow graphs. It is designed primarily, however, as an interface

for expressing and implementing machine learning algorithms, chief among them

deep neural networks.

TensorFlow was designed with portability in mind, enabling these computation

graphs to be executed across a wide variety of environments and hardware platforms.

With essentially identical code, the same TensorFlow neural net could, for instance,

be trained in the cloud, distributed over a cluster of many machines or on a single

laptop. It can be deployed for serving predictions on a dedicated server or on mobile

device platforms such as Android or iOS, or Raspberry Pi single-board computers.

TensorFlow is also compatible, of course, with Linux, macOS, and Windows operat‐

ing systems.

The core of TensorFlow is in C++, and it has two primary high-level frontend lan‐

guages and interfaces for expressing and executing the computation graphs. The most

developed frontend is in Python, used by most researchers and data scientists. The

C++ frontend provides quite a low-level API, useful for efficient execution in embed‐

ded systems and other scenarios.

Aside from its portability, another key aspect of TensorFlow is its flexibility, allowing

researchers and data scientists to express models with relative ease. It is sometimes

revealing to think of modern deep learning research and practice as playing with

“LEGO-like” bricks, replacing blocks of the network with others and seeing what hap‐

pens, and at times designing new blocks. As we shall see throughout this book, Ten‐

sorFlow provides helpful tools to use these modular blocks, combined with a flexible

API that enables the writing of new ones. In deep learning, networks are trained with

6 | Chapter 1: Introduction

a feedback process called backpropagation based on gradient descent optimization.

TensorFlow flexibly supports many optimization algorithms, all with automatic dif‐

ferentiation—the user does not need to specify any gradients in advance, since Ten‐

sorFlow derives them automatically based on the computation graph and loss

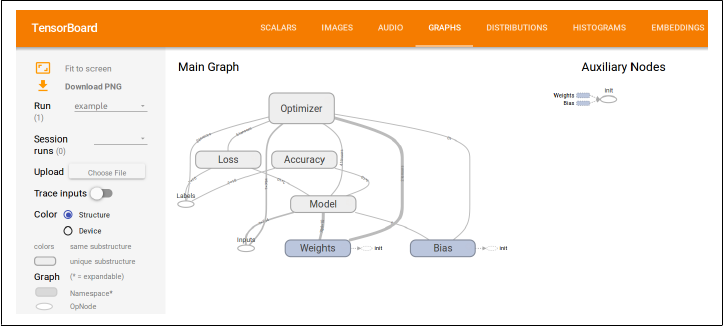

function provided by the user. To monitor, debug, and visualize the training process,

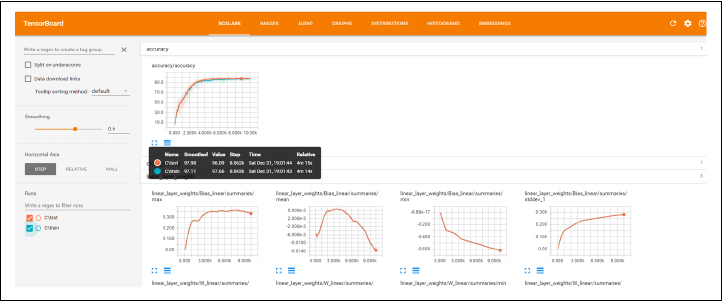

and to streamline experiments, TensorFlow comes with TensorBoard (Figure 1-6), a

simple visualization tool that runs in the browser, which we will use throughout this

book.

Figure 1-6. TensorFlow’s visualization tool, TensorBoard, for monitoring, debugging, and

analyzing the training process and experiments.

Key enablers of TensorFlow’s flexibility for data scientists and researchers are high-

level abstraction libraries. In state-of-the-art deep neural nets for computer vision or

NLU, writing TensorFlow code can take a toll—it can become a complex, lengthy, and

cumbersome endeavor. Abstraction libraries such as Keras and TF-Slim offer simpli‐

fied high-level access to the “LEGO bricks” in the lower-level library, helping to

streamline the construction of the dataflow graphs, training them, and running infer‐

ence. Another key enabler for data scientists and engineers is the pretrained models

that come with TF-Slim and TensorFlow. These models were trained on massive

amounts of data with great computational resources, which are often hard to come by

and in any case require much effort to acquire and set up. Using Keras or TF-Slim, for

example, with just a few lines of code it is possible to use these advanced models for

inference on incoming data, and also to fine-tune the models to adapt to new data.

The flexibility and portability of TensorFlow help make the flow from research to

production smooth, cutting the time and effort it takes for data scientists to push

their models to deployment in products and for engineers to translate algorithmic

ideas into robust code.

A High-Level Overview | 7

TensorFlow abstractions

TensorFlow comes with abstraction libraries such as Keras and TF-

Slim, offering simplified high-level access to TensorFlow. These

abstractions, which we will see later in this book, help streamline

the construction of the dataflow graphs and enable us to train them

and run inference with many fewer lines of code.

But beyond flexibility and portability, TensorFlow has a suite of properties and tools

that make it attractive for engineers who build real-world AI systems. It has natural

support for distributed training—indeed, it is used at Google and other large industry

players to train massive networks on huge amounts of data, over clusters of many

machines. In local implementations, training on multiple hardware devices requires

few changes to code used for single devices. Code also remains relatively unchanged

when going from local to distributed, which makes using TensorFlow in the cloud, on

Amazon Web Services (AWS) or Google Cloud, particularly attractive. Additionally,

as we will see further along in this book, TensorFlow comes with many more features

aimed at boosting scalability. These include support for asynchronous computation

with threading and queues, efficient I/O and data formats, and much more.

Deep learning continues to rapidly evolve, and so does TensorFlow, with frequent

new and exciting additions, bringing better usability, performance, and value.

Summary

With the set of tools and features described in this chapter, it becomes clear why Ten‐

sorFlow has attracted so much attention in little more than a year. This book aims at

first rapidly getting you acquainted with the basics and ready to work, and then we

will dive deeper into the world of TensorFlow with exciting and practical examples.

8 | Chapter 1: Introduction

1We refer the reader to the official TensorFlow install guide for further details, and especially the ever-changing

details of GPU installations.

CHAPTER 2

Go with the Flow: Up and Running

with TensorFlow

In this chapter we start our journey with two working TensorFlow examples. The first

(the traditional “hello world” program), while short and simple, includes many of the

important elements we discuss in depth in later chapters. With the second, a first end-

to-end machine learning model, you will embark on your journey toward state-of-

the-art machine learning with TensorFlow.

Before getting started, we briefly walk through the installation of TensorFlow. In

order to facilitate a quick and painless start, we install the CPU version only, and

defer the GPU installation to later.1 (If you don’t know what this means, that’s OK for

the time being!) If you already have TensorFlow installed, skip to the second section.

Installing TensorFlow

If you are using a clean Python installation (probably set up for the purpose of learn‐

ing TensorFlow), you can get started with the simple pip installation:

$ pip install tensorflow

This approach does, however, have the drawback that TensorFlow will override exist‐

ing packages and install specific versions to satisfy dependencies. If you are using this

Python installation for other purposes as well, this will not do. One common way

around this is to install TensorFlow in a virtual environment, managed by a utility

called virtualenv.

9

Depending on your setup, you may or may not need to install virtualenv on your

machine. To install virtualenv, type:

$ pip install virtualenv

See http://virtualenv.pypa.io for further instructions.

In order to install TensorFlow in a virtual environment, you must first create the vir‐

tual environment—in this book we choose to place these in the ~/envs folder, but feel

free to put them anywhere you prefer:

$ cd ~

$ mkdir envs

$ virtualenv ~/envs/tensorflow

This will create a virtual environment named tensorow in ~/envs (which will mani‐

fest as the folder ~/envs/tensorow). To activate the environment, use:

$ source ~/envs/tensorflow/bin/activate

The prompt should now change to indicate the activated environment:

(tensorflow)$

At this point the pip install command:

(tensorflow)$ pip install tensorflow

will install TensorFlow into the virtual environment, without impacting other pack‐

ages installed on your machine.

Finally, in order to exit the virtual environment, you type:

(tensorflow)$ deactivate

at which point you should get back the regular prompt:

$

TensorFlow for Windows Users

Up until recently TensorFlow had been notoriously difficult to use with Windows

machines. As of TensorFlow 0.12, however, Windows integration is here! It is as sim‐

ple as:

pip install tensorflow

for the CPU version, or:

pip install tensorflow-gpu

for the GPU-enabled version (assuming you already have CUDA 8).

10 | Chapter 2: Go with the Flow: Up and Running with TensorFlow

Adding an alias to ~/.bashrc

The process described for entering and exiting your virtual envi‐

ronment might be too cumbersome if you intend to use it often. In

this case, you can simply append the following command to your

~/.bashrc file:

alias tensorflow="source ~/envs/tensorflow/bin/activate"

and use the command tensorflow to activate the virtual environ‐

ment. To quit the environment, you will still use deactivate.

Now that we have a basic installation of TensorFlow, we can proceed to our first

working examples. We will follow the well-established tradition and start with a

“hello world” program.

Hello World

Our first example is a simple program that combines the words “Hello” and “ World!”

and displays the output—the phrase “Hello World!” While simple and straightfor‐

ward, this example introduces many of the core elements of TensorFlow and the ways

in which it is different from a regular Python program.

We suggest you run this example on your machine, play around with it a bit, and see

what works. Next, we will go over the lines of code and discuss each element sepa‐

rately.

First, we run a simple install and version check (if you used the virtualenv installation

option, make sure to activate it before running TensorFlow code):

import tensorflow as tf

print(tf.__version__)

If correct, the output will be the version of TensorFlow you have installed on your

system. Version mismatches are the most probable cause of issues down the line.

Example 2-1 shows the complete “hello world” example.

Example 2-1. “Hello world” with TensorFlow

import tensorflow as tf

h = tf.constant("Hello")

w = tf.constant(" World!")

hw = h + w

with tf.Session() as sess:

ans = sess.run(hw)

print (ans)

Hello World | 11

We assume you are familiar with Python and imports, in which case the first line:

import tensorflow as tf

requires no explanation.

IDE conguration

If you are running TensorFlow code from an IDE, then make sure

to redirect to the virtualenv where the package is installed. Other‐

wise, you will get the following import error:

ImportError: No module named tensorflow

In the PyCharm IDE this is done by selecting Run→Edit Configu‐

rations, then changing Python Interpreter to point to ~/envs/

tensorow/bin/python, assuming you used ~/envs/tensorow as the

virtualenv directory.

Next, we define the constants "Hello" and " World!", and combine them:

import tensorflow as tf

h = tf.constant("Hello")

w = tf.constant(" World!")

hw = h + w

At this point, you might wonder how (if at all) this is different from the simple

Python code for doing this:

ph = "Hello"

pw = " World!"

phw = h + w

The key point here is what the variable hw contains in each case. We can check this

using the print command. In the pure Python case we get this:

>print phw

Hello World!

In the TensorFlow case, however, the output is completely different:

>print hw

Tensor("add:0", shape=(), dtype=string)

Probably not what you expected!

In the next chapter we explain the computation graph model of TensorFlow in detail,

at which point this output will become completely clear. The key idea behind compu‐

tation graphs in TensorFlow is that we first define what computations should take

place, and then trigger the computation in an external mechanism. Thus, the Tensor‐

Flow line of code:

12 | Chapter 2: Go with the Flow: Up and Running with TensorFlow

hw = h + w

does not compute the sum of h and w, but rather adds the summation operation to a

graph of computations to be done later.

Next, the Session object acts as an interface to the external TensorFlow computation

mechanism, and allows us to run parts of the computation graph we have already

defined. The line:

ans = sess.run(hw)

actually computes hw (as the sum of h and w, the way it was defined previously), fol‐

lowing which the printing of ans displays the expected “Hello World!” message.

This completes the first TensorFlow example. Next, we dive right in with a simple

machine learning example, which already shows a great deal of the promise of the

TensorFlow framework.

MNIST

The MNIST (Mixed National Institute of Standards and Technology) handwritten

digits dataset is one of the most researched datasets in image processing and machine

learning, and has played an important role in the development of artificial neural net‐

works (now generally referred to as deep learning).

As such, it is fitting that our first machine learning example should be dedicated to

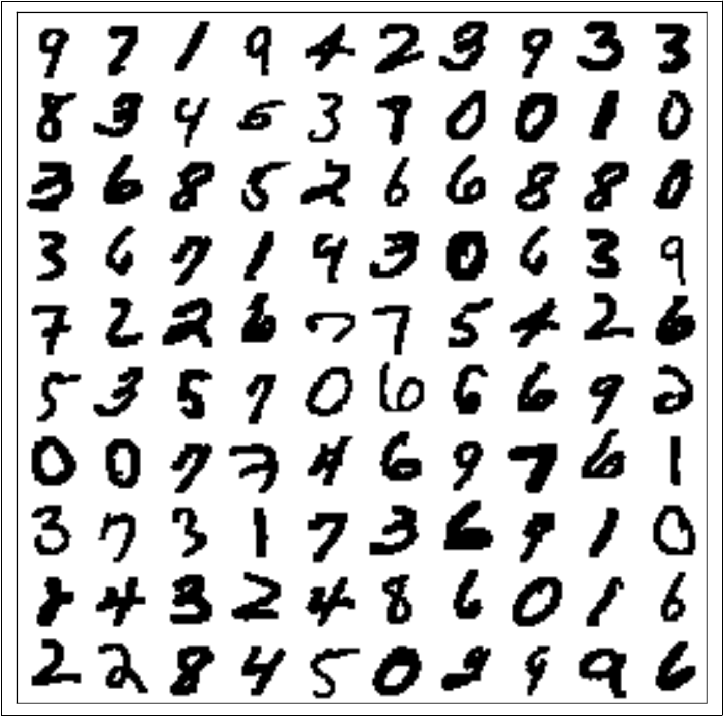

the classification of handwritten digits (Figure 2-1 shows a random sample from the

dataset). At this point, in the interest of keeping it simple, we will apply a very simple

classifier. This simple model will suffice to classify approximately 92% of the test set

correctly—the best models currently available reach over 99.75% correct classifica‐

tion, but we have a few more chapters to go until we get there! Later in the book, we

will revisit this data and use more sophisticated methods.

MNIST | 13

Figure 2-1. 100 random MNIST images

Softmax Regression

In this example we will use a simple classifier called somax regression. We will not go

into the mathematical formulation of the model in too much detail (there are plenty

of good resources where you can find this information, and we strongly suggest that

you do so, if you have never seen this before). Rather, we will try to provide some

intuition into the way the model is able to solve the digit recognition problem.

Put simply, the softmax regression model will figure out, for each pixel in the image,

which digits tend to have high (or low) values in that location. For instance, the cen‐

ter of the image will tend to be white for zeros, but black for sixes. Thus, a black pixel

14 | Chapter 2: Go with the Flow: Up and Running with TensorFlow

2It is common to add a “bias term,” which is equivalent to stating which digits we believe an image to be before

seeing the pixel values. If you have seen this before, then try adding it to the model and check how it affects

the results.

3If you are familiar with softmax regression, you probably realize this is a simplification of the way it works,

especially when pixel values are as correlated as with digit images.

in the center of an image will be evidence against the image containing a zero, and in

favor of it containing a six.

Learning in this model consists of finding weights that tell us how to accumulate evi‐

dence for the existence of each of the digits. With softmax regression, we will not use

the spatial information in the pixel layout in the image. Later on, when we discuss

convolutional neural networks, we will see that utilizing spatial information is one of

the key elements in making great image-processing and object-recognition models.

Since we are not going to use the spatial information at this point, we will unroll our

image pixels as a single long vector denoted x (Figure 2-2). Then

xw0 = ∑xiwi

0

will be the evidence for the image containing the digit 0 (and in the same way we will

have wd weight vectors for each one of the other digits, d= 1, . . ., 9).

Figure 2-2. MNIST image pixels unrolled to vectors and stacked as columns (sorted by

digit from le to right). While the loss of spatial information doesn’t allow us to recog‐

nize the digits, the block structure evident in this gure is what allows the somax model

to classify images. Essentially, all zeros (lemost block) share a similar pixel structure, as

do all ones (second block from the le), etc.

All this means is that we sum up the pixel values, each multiplied by a weight, which

we think of as the importance of this pixel in the overall evidence for the digit zero

being in the image.2

For instance, w038 will be a large positive number if the 38th pixel having a high inten‐

sity points strongly to the digit being a zero, a strong negative number if high-

intensity values in this position occur mostly in other digits, and zero if the intensity

value of the 38th pixel tells us nothing about whether or not this digit is a zero.3

Performing this calculation at once for all digits (computing the evidence for each of

the digits appearing in the image) can be represented by a single matrix operation. If

Softmax Regression | 15

we place the weights for each of the digits in the columns of a matrix W, then the

length-10 vector with the evidence for each of the digits is

[xw0···xw9] = xW

The purpose of learning a classifier is almost always to evaluate new examples. In this

case, this means that we would like to be able to tell what digit is written in a new

image we have not seen in our training data. In order to do this, we start by summing

up the evidence for each of the 10 possible digits (i.e., computing xW). The final

assignment will be the digit that “wins” by accumulating the most evidence:

digit = argmax(xW)

We start by presenting the code for this example in its entirety (Example 2-2), then

walk through it line by line and go over the details. You may find that there are many

novel elements or that some pieces of the puzzle are missing at this stage, but our

advice is that you go with it for now. Everything will become clear in due course.

Example 2-2. Classifying MNIST handwritten digits with somax regression

import tensorflow as tf

from tensorflow.examples.tutorials.mnist import input_data

DATA_DIR = '/tmp/data'

NUM_STEPS = 1000

MINIBATCH_SIZE = 100

data = input_data.read_data_sets(DATA_DIR, one_hot=True)

x = tf.placeholder(tf.float32, [None, 784])

W = tf.Variable(tf.zeros([784, 10]))

y_true = tf.placeholder(tf.float32, [None, 10])

y_pred = tf.matmul(x, W)

cross_entropy = tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits(

logits=y_pred, labels=y_true))

gd_step = tf.train.GradientDescentOptimizer(0.5).minimize(cross_entropy)

correct_mask = tf.equal(tf.argmax(y_pred, 1), tf.argmax(y_true, 1))

accuracy = tf.reduce_mean(tf.cast(correct_mask, tf.float32))

with tf.Session() as sess:

# Train

sess.run(tf.global_variables_initializer())

16 | Chapter 2: Go with the Flow: Up and Running with TensorFlow

for _ in range(NUM_STEPS):

batch_xs, batch_ys = data.train.next_batch(MINIBATCH_SIZE)

sess.run(gd_step, feed_dict={x: batch_xs, y_true: batch_ys})

# Test

ans = sess.run(accuracy, feed_dict={x: data.test.images,

y_true: data.test.labels})

print "Accuracy: {:.4}%".format(ans*100)

If you run the code on your machine, you should get output like this:

Extracting /tmp/data/train-images-idx3-ubyte.gz

Extracting /tmp/data/train-labels-idx1-ubyte.gz

Extracting /tmp/data/t10k-images-idx3-ubyte.gz

Extracting /tmp/data/t10k-labels-idx1-ubyte.gz

Accuracy: 91.83%

That’s all it takes! If you have put similar models together before using other plat‐

forms, you might appreciate the simplicity and readability. However, these are just

side bonuses, with the efficiency and flexibility gained from the computation graph

model of TensorFlow being what we are really interested in.

The exact accuracy value you get will be just under 92%. If you run the program once

more, you will get another value. This sort of stochasticity is very common in

machine learning code, and you have probably seen similar results before. In this

case, the source is the changing order in which the handwritten digits are presented

to the model during learning. As a result, the learned parameters following training

are slightly different from run to run.

Running the same program five times might therefore produce this result:

Accuracy: 91.86%

Accuracy: 91.51%

Accuracy: 91.62%

Accuracy: 91.93%

Accuracy: 91.88%

We will now briefly go over the code for this example and see what is new from the

previous “hello world” example. We’ll break it down line by line:

import tensorflow as tf

from tensorflow.examples.tutorials.mnist import input_data

The first new element in this example is that we use external data! Rather than down‐

loading the MNIST dataset (freely available at http://yann.lecun.com/exdb/mnist/) and

loading it into our program, we use a built-in utility for retrieving the dataset on the

fly. Such utilities exist for most popular datasets, and when dealing with small ones

(in this case only a few MB), it makes a lot of sense to do it this way. The second

Softmax Regression | 17

4Here and throughout, before running the example code, make sure DATA_DIR fits the operating system you are

using. On Windows, for instance, you would probably use something like c:\tmp\data instead.

import loads the utility we will later use both to automatically download the data for

us, and to manage and partition it as needed:

DATA_DIR = '/tmp/data'

NUM_STEPS = 1000

MINIBATCH_SIZE = 100

Here we define some constants that we use in our program—these will each be

explained in the context in which they are first used:

data = input_data.read_data_sets(DATA_DIR, one_hot=True)

The read_data_sets() method of the MNIST reading utility downloads the dataset

and saves it locally, setting the stage for further use later in the program. The first

argument, DATA_DIR, is the location we wish the data to be saved to locally. We set this

to '/tmp/data', but any other location would be just as good. The second argument

tells the utility how we want the data to be labeled; we will not go into this right now.4

Note that this is what prints the first four lines of the output, indicating the data was

obtained correctly. Now we are finally ready to set up our model:

x = tf.placeholder(tf.float32, [None, 784])

W = tf.Variable(tf.zeros([784, 10]))

In the previous example we saw the TensorFlow constant element—this is now com‐

plemented by the placeholder and Variable elements. For now, it is enough to

know that a variable is an element manipulated by the computation, while a place‐

holder has to be supplied when triggering it. The image itself (x) is a placeholder,

because it will be supplied by us when running the computation graph. The size

[None, 784] means that each image is of size 784 (28×28 pixels unrolled into a single

vector), and None is an indicator that we are not currently specifying how many of

these images we will use at once:

y_true = tf.placeholder(tf.float32, [None, 10])

y_pred = tf.matmul(x, W)

In the next chapter these concepts will be dealt with in much more depth.

A key concept in a large class of machine learning tasks is that we would like to learn

a function from data examples (in our case, digit images) to their known labels (the

identity of the digit in the image). This setting is called supervised learning. In most

supervised learning models, we attempt to learn a model such that the true labels and

the predicted labels are close in some sense. Here, y_true and y_pred are the ele‐

ments representing the true and predicted labels, respectively:

18 | Chapter 2: Go with the Flow: Up and Running with TensorFlow

5As of TensorFlow 1.0 this is also contained in tf.losses.softmax_cross_entropy.

6As of TensorFlow 1.0 this is also contained in tf.metrics.accuracy.

cross_entropy = tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits(

logits=y_pred, labels=y_true))

The measure of similarity we choose for this model is what is known as cross entropy

—a natural choice when the model outputs class probabilities. This element is often

referred to as the loss function:5

gd_step = tf.train.GradientDescentOptimizer(0.5).minimize(cross_entropy)

The final piece of the model is how we are going to train it (i.e., how we are going to

minimize the loss function). A very common approach is to use gradient descent

optimization. Here, 0.5 is the learning rate, controlling how fast our gradient descent

optimizer shifts model weights to reduce overall loss.

We will discuss optimizers and how they fit into the computation graph later on in

the book.

Once we have defined our model, we want to define the evaluation procedure we will

use in order to test the accuracy of the model. In this case, we are interested in the

fraction of test examples that are correctly classified:6

correct_mask = tf.equal(tf.argmax(y_pred, 1), tf.argmax(y_true, 1))

accuracy = tf.reduce_mean(tf.cast(correct_mask, tf.float32))

As with the “hello world” example, in order to make use of the computation graph we

defined, we must create a session. The rest happens within the session:

with tf.Session() as sess:

First, we must initialize all variables:

sess.run(tf.global_variables_initializer())

This carries some specific implications in the realm of machine learning and optimi‐

zation, which we will discuss further when we use models for which initialization is

an important issue

Supervised Learning and the Train/Test Scheme

Supervised learning generally refers to the task of learning a function from data

objects to labels associated with them, based on a set of examples where the correct

labels are already known. This is usually subdivided into the case where labels are

continuous (regression) or discrete (classification).

The purpose of training supervised learning models is almost always to apply them

later to new examples with unknown labels, in order to obtain predicted labels for

Softmax Regression | 19

them. In the MNIST case discussed in this section, the purpose of training the model

would probably be to apply it on new handwritten digit images and automatically

find out what digits they represent.

As a result, we are interested in the extent to which our model will label new examples

correctly. This is reflected in the way we evaluate the accuracy of the model. We first

partition the labeled dataset into train and test partitions. During model training we

use only the train partition, and during evaluation we test the accuracy only on the

test partition. This scheme is generally known as a train/test validation.

for _ in range(NUM_STEPS):

batch_xs, batch_ys = data.train.next_batch(MINIBATCH_SIZE)

sess.run(gd_step, feed_dict={x: batch_xs, y_true: batch_ys})

The actual training of the model, in the gradient descent approach, consists of taking

many steps in “the right direction.” The number of steps we will make, NUM_STEPS,

was set to 1,000 in this case. There are more sophisticated ways of deciding when to

stop, but more about that later! In each step we ask our data manager for a bunch of

examples with their labels and present them to the learner. The MINIBATCH_SIZE con‐

stant controls the number of examples to use for each step.

Finally, we use the feed_dict argument of sess.run for the first time. Recall that we

defined placeholder elements when constructing the model. Now, each time we want

to run a computation that will include these elements, we must supply a value for

them.

ans = sess.run(accuracy, feed_dict={x: data.test.images,

y_true: data.test.labels})

In order to evaluate the model we have just finished learning, we run the accuracy

computing operation defined earlier (recall the accuracy was defined as the fraction

of images that are correctly labeled). In this procedure, we feed a separate group of

test images, which were never seen by the model during training:

print "Accuracy: {:.4}%".format(ans*100)

Lastly, we print out the results as percent values.

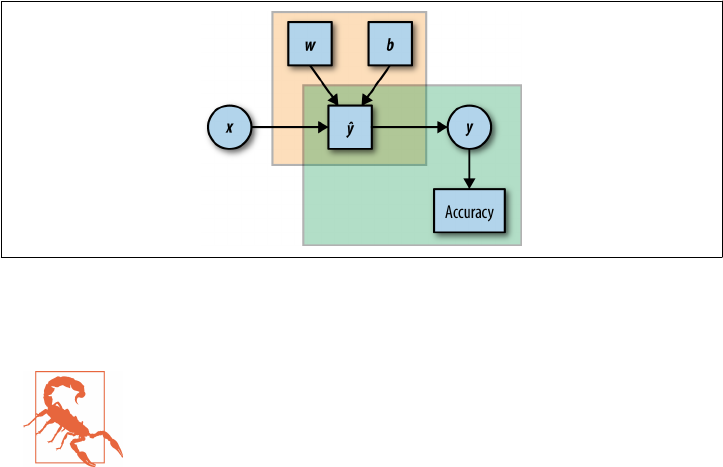

Figure 2-3 shows a graph representation of our model.

20 | Chapter 2: Go with the Flow: Up and Running with TensorFlow

Figure 2-3. A graph representation of the model. Rectangular elements are Variables,

and circles are placeholders. e top-le frame represents the label prediction part, and

the bottom-right frame the evaluation.

Model evaluation and memory errors

When using TensorFlow, like any other system, it is important to

be aware of the resources being used, and make sure not to exceed

the capacity of the system. One possible pitfall is in the evaluation

of models—testing their performance on a test set. In this example

we evaluate the accuracy of the models by feeding all the test exam‐

ples in one go:

feed_dict={x: data.test.images, y_true: data.test.labels}

ans = sess.run(accuracy, feed_dict)

If all the test examples (here, data.test.images) are not able to fit

into the memory in the system you are using, you will get a mem‐

ory error at this point. This is likely to be the case, for instance, if

you are running this example on a typical low-end GPU.

The easy way around this (getting a machine with more memory is

a temporary fix, since there will always be larger datasets) is to split

the test procedure into batches, much as we did during training.

Summary

Congratulations! By now you have installed TensorFlow and taken it for a spin with

two basic examples. You have seen some of the fundamental building blocks that will

be used throughout the book, and have hopefully begun to get a feel for TensorFlow.

Next, we take a look under the hood and explore the computation graph model used

by TensorFlow.

Summary | 21

CHAPTER 3

Understanding TensorFlow Basics

This chapter demonstrates the key concepts of how TensorFlow is built and how it

works with simple and intuitive examples. You will get acquainted with the basics of

TensorFlow as a numerical computation library using dataflow graphs. More specifi‐

cally, you will learn how to manage and create a graph, and be introduced to Tensor‐

Flow’s “building blocks,” such as constants, placeholders, and Variables.

Computation Graphs

TensorFlow allows us to implement machine learning algorithms by creating and

computing operations that interact with one another. These interactions form what

we call a “computation graph,” with which we can intuitively represent complicated

functional architectures.

What Is a Computation Graph?

We assume a lot of readers have already come across the mathematical concept of a

graph. For those to whom this concept is new, a graph refers to a set of interconnec‐

ted entities, commonly called nodes or vertices. These nodes are connected to each

other via edges. In a dataflow graph, the edges allow data to “flow” from one node to

another in a directed manner.

In TensorFlow, each of the graph’s nodes represents an operation, possibly applied to

some input, and can generate an output that is passed on to other nodes. By analogy,

we can think of the graph computation as an assembly line where each machine

(node) either gets or creates its raw material (input), processes it, and then passes the

output to other machines in an orderly fashion, producing subcomponents and even‐

tually a final product when the assembly process comes to an end.

23

Operations in the graph include all kinds of functions, from simple arithmetic ones

such as subtraction and multiplication to more complex ones, as we will see later on.

They also include more general operations like the creation of summaries, generating

constant values, and more.

The Benets of Graph Computations

TensorFlow optimizes its computations based on the graph’s connectivity. Each graph

has its own set of node dependencies. When the input of node y is affected by the

output of node x, we say that node y is dependent on node x. We call it a direct

dependency when the two are connected via an edge, and an indirect dependency

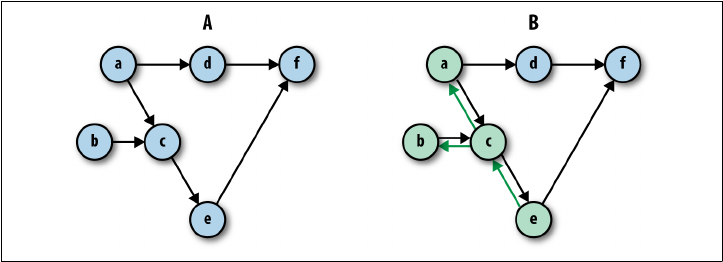

otherwise. For example, in Figure 3-1 (A), node e is directly dependent on

node c, indirectly dependent on node a, and independent of node d.

Figure 3-1. (A) Illustration of graph dependencies. (B) Computing node e results in the

minimal amount of computations according to the graph’s dependencies—in this case

computing only nodes c, b, and a.

We can always identify the full set of dependencies for each node in the graph. This is

a fundamental characteristic of the graph-based computation format. Being able to

locate dependencies between units of our model allows us to both distribute compu‐

tations across available resources and avoid performing redundant computations of

irrelevant subsets, resulting in a faster and more efficient way of computing things.

Graphs, Sessions, and Fetches

Roughly speaking, working with TensorFlow involves two main phases: (1) con‐

structing a graph and (2) executing it. Let’s jump into our first example and create

something very basic.

24 | Chapter 3: Understanding TensorFlow Basics

Creating a Graph

Right after we import TensorFlow (with import tensorflow as tf), a specific

empty default graph is formed. All the nodes we create are automatically associated

with that default graph.

Using the tf.<operator> methods, we will create six nodes assigned to arbitrarily

named variables. The contents of these variables should be regarded as the output of

the operations, and not the operations themselves. For now we refer to both the oper‐

ations and their outputs with the names of their corresponding variables.

The first three nodes are each told to output a constant value. The values 5, 2, and 3

are assigned to a, b, and c, respectively:

a = tf.constant(5)

b = tf.constant(2)

c = tf.constant(3)

Each of the next three nodes gets two existing variables as inputs, and performs sim‐

ple arithmetic operations on them:

d = tf.multiply(a,b)

e = tf.add(c,b)

f = tf.subtract(d,e)

Node d multiplies the outputs of nodes a and b. Node e adds the outputs of nodes

b and c. Node f subtracts the output of node e from that of node d.

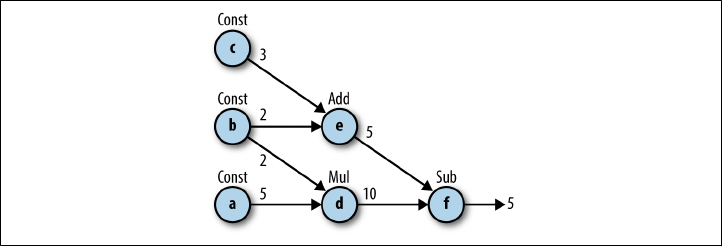

And voilà! We have our first TensorFlow graph! Figure 3-2 shows an illustration of

the graph we’ve just created.

Figure 3-2. An illustration of our rst constructed graph. Each node, denoted by a lower‐

case letter, performs the operation indicated above it: Const for creating constants and

Add, Mul, and Sub for addition, multiplication, and subtraction, respectively. e inte‐

ger next to each edge is the output of the corresponding node’s operation.

Note that for some arithmetic and logical operations it is possible to use operation

shortcuts instead of having to apply tf.<operator>. For example, in this graph we

Graphs, Sessions, and Fetches | 25

could have used */+/- instead of tf.multiply()/tf.add()/tf.subtract() (like we

did in the “hello world” example in Chapter 2, where we used + instead of tf.add()).

Table 3-1 lists the available shortcuts.

Table 3-1. Common TensorFlow operations and their respective shortcuts

TensorFlow operator Shortcut Description

tf.add() a + b Adds a and b, element-wise.

tf.multiply() a * b Multiplies a and b, element-wise.

tf.subtract() a - b Subtracts a from b, element-wise.

tf.divide() a / b Computes Python-style division of a by b.

tf.pow() a ** b Returns the result of raising each element in a to its corresponding element b,

element-wise.

tf.mod() a % b Returns the element-wise modulo.

tf.logical_and() a & b Returns the truth table of a & b, element-wise. dtype must be tf.bool.

tf.greater() a > b Returns the truth table of a > b, element-wise.

tf.greater_equal() a >= b Returns the truth table of a >= b, element-wise.

tf.less_equal() a <= b Returns the truth table of a <= b, element-wise.

tf.less() a < b Returns the truth table of a < b, element-wise.

tf.negative() -a Returns the negative value of each element in a.

tf.logical_not() ~a Returns the logical NOT of each element in a. Only compatible with Tensor objects

with dtype of tf.bool.

tf.abs() abs(a) Returns the absolute value of each element in a.

tf.logical_or() a | b Returns the truth table of a | b, element-wise. dtype must be tf.bool.

Creating a Session and Running It

Once we are done describing the computation graph, we are ready to run the compu‐

tations that it represents. For this to happen, we need to create and run a session. We

do this by adding the following code:

sess = tf.Session()

outs = sess.run(f)

sess.close()

print("outs = {}".format(outs))

Out:

outs = 5

First, we launch the graph in a tf.Session. A Session object is the part of the Ten‐

sorFlow API that communicates between Python objects and data on our end, and

the actual computational system where memory is allocated for the objects we define,

intermediate variables are stored, and finally results are fetched for us.

sess = tf.Session()

26 | Chapter 3: Understanding TensorFlow Basics

The execution itself is then done with the .run() method of the Session

object. When called, this method completes one set of computations in our graph in

the following manner: it starts at the requested output(s) and then works backward,

computing nodes that must be executed according to the set of dependencies. There‐

fore, the part of the graph that will be computed depends on our output query.

In our example, we requested that node f be computed and got its value, 5, as output:

outs = sess.run(f)

When our computation task is completed, it is good practice to close the session

using the sess.close() command, making sure the resources used by our session are

freed up. This is an important practice to maintain even though we are not obligated

to do so for things to work:

sess.close()

Example 3-1. Try it yourself! Figure 3-3 shows another two graph examples. See if you

can produce these graphs yourself.

Figure 3-3. Can you create graphs A and B? (To produce the sine function, use tf.sin(x)).

Constructing and Managing Our Graph

As mentioned, as soon as we import TensorFlow, a default graph is automatically cre‐

ated for us. We can create additional graphs and control their association with some

given operations. tf.Graph() creates a new graph, represented as a TensorFlow

object. In this example we create another graph and assign it to the variable g:

Graphs, Sessions, and Fetches | 27

import tensorflow as tf

print(tf.get_default_graph())

g = tf.Graph()

print(g)

Out:

<tensorflow.python.framework.ops.Graph object at 0x7fd88c3c07d0>

<tensorflow.python.framework.ops.Graph object at 0x7fd88c3c03d0>

At this point we have two graphs: the default graph and the empty graph in g. Both

are revealed as TensorFlow objects when printed. Since g hasn’t been assigned as the

default graph, any operation we create will not be associated with it, but rather with

the default one.

We can check which graph is currently set as the default by using

tf.get_default_graph(). Also, for a given node, we can view the graph it’s associ‐

ated with by using the <node>.graph attribute:

g = tf.Graph()

a = tf.constant(5)

print(a.graph is g)

print(a.graph is tf.get_default_graph())

Out:

False

True

In this code example we see that the operation we’ve created is associated with the

default graph and not with the graph in g.

To make sure our constructed nodes are associated with the right graph we can con‐

struct them using a very useful Python construct: the with statement.

The with statement

The with statement is used to wrap the execution of a block with

methods defined by a context manager—an object that has the spe‐

cial method functions .__enter__() to set up a block of code

and .__exit__() to exit the block.

In layman’s terms, it’s very convenient in many cases to execute

some code that requires “setting up” of some kind (like opening a

file, SQL table, etc.) and then always “tearing it down” at the end,

regardless of whether the code ran well or raised any kind of excep‐

tion. In our case we use with to set up a graph and make sure every

piece of code will be performed in the context of that graph.

28 | Chapter 3: Understanding TensorFlow Basics

We use the with statement together with the as_default() command, which returns

a context manager that makes this graph the default one. This comes in handy when

working with multiple graphs:

g1 = tf.get_default_graph()

g2 = tf.Graph()

print(g1 is tf.get_default_graph())

with g2.as_default():

print(g1 is tf.get_default_graph())

print(g1 is tf.get_default_graph())

Out:

True

False

True

The with statement can also be used to start a session without having to explicitly

close it. This convenient trick will be used in the following examples.

Fetches

In our initial graph example, we request one specific node (node f) by passing the

variable it was assigned to as an argument to the sess.run() method. This argument

is called fetches, corresponding to the elements of the graph we wish to com‐

pute. We can also ask sess.run() for multiple nodes’ outputs simply by inputting a

list of requested nodes:

with tf.Session() as sess:

fetches = [a,b,c,d,e,f]

outs = sess.run(fetches)

print("outs = {}".format(outs))

print(type(outs[0]))

Out:

outs = [5, 2, 3, 10, 5, 5]

<type 'numpy.int32'>

We get back a list containing the outputs of the nodes according to how they were

ordered in the input list. The data in each item of the list is of type NumPy.

Graphs, Sessions, and Fetches | 29

NumPy

NumPy is a popular and useful Python package for numerical com‐

puting that offers many functionalities related to working with

arrays. We assume some basic familiarity with this package, and it

will not be covered in this book. TensorFlow and NumPy are

tightly coupled—for example, the output returned by sess.run()

is a NumPy array. In addition, many of TensorFlow’s operations

share the same syntax as functions in NumPy. To learn more about

NumPy, we refer the reader to Eli Bressert’s book SciPy and NumPy

(O’Reilly).

We mentioned that TensorFlow computes only the essential nodes according to the

set of dependencies. This is also manifested in our example: when we ask for the out‐

put of node d, only the outputs of nodes a and b are computed. Another example is

shown in Figure 3-1(B). This is a great advantage of TensorFlow—it doesn’t matter

how big and complicated our graph is as a whole, since we can run just a small por‐

tion of it as needed.

Automatically closing the session

Opening a session using the with clause will ensure the session is

automatically closed once all computations are done.

Flowing Tensors

In this section we will get a better understanding of how nodes and edges are actually

represented in TensorFlow, and how we can control their characteristics. To demon‐

strate how they work, we will focus on source operations, which are used to initialize

values.

Nodes Are Operations, Edges Are Tensor Objects

When we construct a node in the graph, like we did with tf.add(), we are actually

creating an operation instance. These operations do not produce actual values until

the graph is executed, but rather reference their to-be-computed result as a handle

that can be passed on—ow—to another node. These handles, which we can think of

as the edges in our graph, are referred to as Tensor objects, and this is where the

name TensorFlow originates from.

TensorFlow is designed such that first a skeleton graph is created with all of its com‐

ponents. At this point no actual data flows in it and no computations take place. It is

only upon execution, when we run the session, that data enters the graph and compu‐

30 | Chapter 3: Understanding TensorFlow Basics

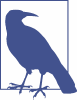

tations occur (as illustrated in Figure 3-4). This way, computations can be much more

efficient, taking the entire graph structure into consideration.

Figure 3-4. Illustrations of before (A) and aer (B) running a session. When the session

is run, actual data “ows” through the graph.

In the previous section’s example, tf.constant() created a node with the corre‐

sponding passed value. Printing the output of the constructor, we see that it’s actually

a Tensor object instance. These objects have methods and attributes that control their

behavior and that can be defined upon creation.

In this example, the variable c stores a Tensor object with the name Const_52:0, des‐

ignated to contain a 32-bit floating-point scalar:

c = tf.constant(4.0)

print(c)

Out:

Tensor("Const_52:0", shape=(), dtype=float32)

A note on constructors

The tf.<operator> function could be thought of as a constructor,

but to be more precise, this is actually not a constructor at all, but

rather a factory method that sometimes does quite a bit more than

just creating the operator objects.

Setting attributes with source operations

Each Tensor object in TensorFlow has attributes such as name, shape, and dtype that

help identify and set the characteristics of that object. These attributes are optional

Flowing Tensors | 31

when creating a node, and are set automatically by TensorFlow when missing. In the

next section we will take a look at these attributes. We will do so by looking at Tensor

objects created by ops known as source operations. Source operations are operations

that create data, usually without using any previously processed inputs. With these

operations we can create scalars, as we already encountered with the tf.constant()

method, as well as arrays and other types of data.

Data Types

The basic units of data that pass through a graph are numerical, Boolean, or string

elements. When we print out the Tensor object c from our last code example, we see

that its data type is a floating-point number. Since we didn’t specify the type of data,

TensorFlow inferred it automatically. For example 5 is regarded as an integer, while

anything with a decimal point, like 5.1, is regarded as a floating-point number.

We can explicitly choose what data type we want to work with by specifying it when

we create the Tensor object. We can see what type of data was set for a given Tensor

object by using the attribute dtype:

c = tf.constant(4.0, dtype=tf.float64)

print(c)

print(c.dtype)

Out:

Tensor("Const_10:0", shape=(), dtype=float64)

<dtype: 'float64'>

Explicitly asking for (appropriately sized) integers is on the one hand more memory

conserving, but on the other may result in reduced accuracy as a consequence of not

tracking digits after the decimal point.

Casting

It is important to make sure our data types match throughout the graph—performing

an operation with two nonmatching data types will result in an exception. To change

the data type setting of a Tensor object, we can use the tf.cast() operation, passing

the relevant Tensor and the new data type of interest as the first and second argu‐

ments, respectively:

x = tf.constant([1,2,3],name='x',dtype=tf.float32)

print(x.dtype)

x = tf.cast(x,tf.int64)

print(x.dtype)

Out:

<dtype: 'float32'>

<dtype: 'int64'>

32 | Chapter 3: Understanding TensorFlow Basics

TensorFlow supports many data types. These are listed in Table 3-2.

Table 3-2. Supported Tensor data types

Data type Python type Description

DT_FLOAT tf.float32 32-bit oating point.

DT_DOUBLE tf.float64 64-bit oating point.

DT_INT8 tf.int8 8-bit signed integer.

DT_INT16 tf.int16 16-bit signed integer.

DT_INT32 tf.int32 32-bit signed integer.

DT_INT64 tf.int64 64-bit signed integer.

DT_UINT8 tf.uint8 8-bit unsigned integer.

DT_UINT16 tf.uint16 16-bit unsigned integer.

DT_STRING tf.string Variable-length byte array. Each element of a Tensor is a byte array.

DT_BOOL tf.bool Boolean.

DT_COMPLEX64 tf.complex64 Complex number made of two 32-bit oating points: real and imaginary parts.

DT_COMPLEX128 tf.complex128 Complex number made of two 64-bit oating points: real and imaginary parts.

DT_QINT8 tf.qint8 8-bit signed integer used in quantized ops.

DT_QINT32 tf.qint32 32-bit signed integer used in quantized ops.

DT_QUINT8 tf.quint8 8-bit unsigned integer used in quantized ops.

Tensor Arrays and Shapes

A source of potential confusion is that two different things are referred to by the

name, Tensor. As used in the previous sections, Tensor is the name of an object used

in the Python API as a handle for the result of an operation in the graph. However,

tensor is also a mathematical term for n-dimensional arrays. For example, a 1×1 ten‐

sor is a scalar, a 1×n tensor is a vector, an n×n tensor is a matrix, and an n×n×n tensor

is just a three-dimensional array. This, of course, generalizes to any dimension. Ten‐

sorFlow regards all the data units that flow in the graph as tensors, whether they are

multidimensional arrays, vectors, matrices, or scalars. The TensorFlow objects called

Tensors are named after these mathematical tensors.

To clarify the distinction between the two, from now on we will refer to the former as

Tensors with a capital T and the latter as tensors with a lowercase t.

As with dtype, unless stated explicitly, TensorFlow automatically infers the shape of

the data. When we printed out the Tensor object at the beginning of this section, it

showed that its shape was (), corresponding to the shape of a scalar.

Using scalars is good for demonstration purposes, but most of the time it’s much

more practical to work with multidimensional arrays. To initialize high-dimensional

arrays, we can use Python lists or NumPy arrays as inputs. In the following example,

Flowing Tensors | 33

we use as inputs a 2×3 matrix using a Python list and then a 3D NumPy array of size

2×2×3 (two matrices of size 2×3):

import numpy as np

c = tf.constant([[1,2,3],

[4,5,6]])

print("Python List input: {}".format(c.get_shape()))

c = tf.constant(np.array([

[[1,2,3],

[4,5,6]],

[[1,1,1],

[2,2,2]]

]))

print("3d NumPy array input: {}".format(c.get_shape()))

Out:

Python list input: (2, 3)

3d NumPy array input: (2, 2, 3)

The get_shape() method returns the shape of the tensor as a tuple of integers. The

number of integers corresponds to the number of dimensions of the tensor, and each

integer is the number of array entries along that dimension. For example, a shape of

(2,3) indicates a matrix, since it has two integers, and the size of the matrix is 2×3.

Other types of source operation constructors are very useful for initializing constants

in TensorFlow, like filling a constant value, generating random numbers, and creating

sequences.

Random-number generators have special importance as they are used in many cases

to create the initial values for TensorFlow Variables, which will be introduced

shortly. For example, we can generate random numbers from a normal distribution

using tf.random.normal(), passing the shape, mean, and standard deviation as the

first, second, and third arguments, respectively. Another two examples for useful ran‐

dom initializers are the truncated normal that, as its name implies, cuts off all values

below and above two standard deviations from the mean, and the uniform initializer

that samples values uniformly within some interval [a,b).

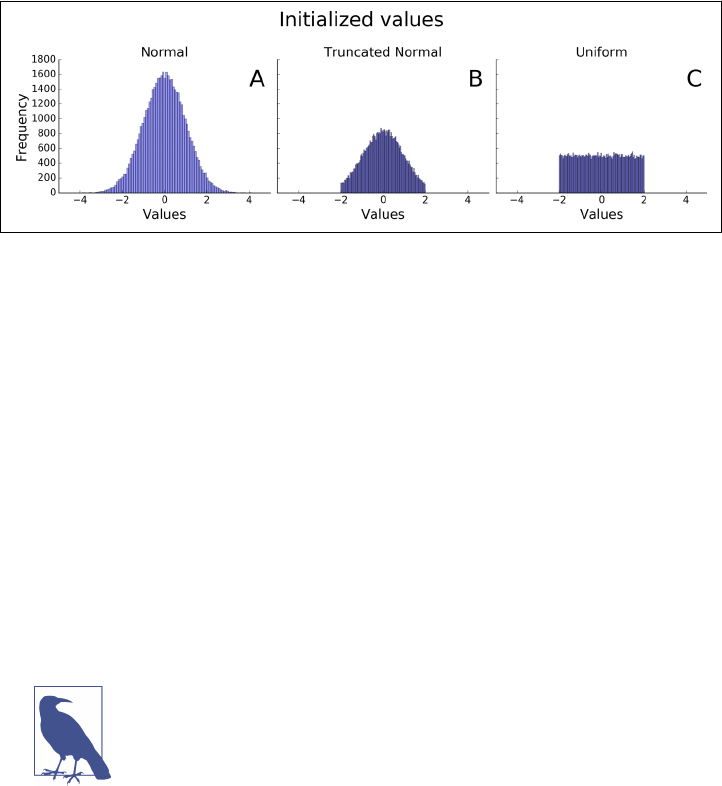

Examples of sampled values for each of these methods are shown in Figure 3-5.

34 | Chapter 3: Understanding TensorFlow Basics

Figure 3-5. 50,000 random samples generated from (A) standard normal distribution,

(B) truncated normal, and (C) uniform [–2,2).

Those who are familiar with NumPy will recognize some of the initializers, as they

share the same syntax. One example is the sequence generator tf.linspace(a, b,

n) that creates n evenly spaced values from a to b.

A feature that is convenient to use when we want to explore the data content of an

object is tf.InteractiveSession(). Using it and the .eval() method, we can get a

full look at the values without the need to constantly refer to the session object:

sess = tf.InteractiveSession()

c = tf.linspace(0.0, 4.0, 5)

print("The content of 'c':\n {}\n".format(c.eval()))

sess.close()

Out:

The content of 'c':

[ 0. 1. 2. 3. 4.]

Interactive sessions

tf.InteractiveSession() allows you to replace the usual tf.Ses

sion(), so that you don’t need a variable holding the session for

running ops. This can be useful in interactive Python environ‐

ments, like when writing IPython notebooks, for instance.

We’ve mentioned only a few of the available source operations. Table 3-2 provides

short descriptions of more useful initializers.

Flowing Tensors | 35

TensorFlow operation Description

tf.constant(value)Creates a tensor populated with the value or values specied by the argument value

tf.fill(shape, value)Creates a tensor of shape shape and lls it with value

tf.zeros(shape)Returns a tensor of shape shape with all elements set to 0

tf.zeros_like(tensor)Returns a tensor of the same type and shape as tensor with all elements set to 0

tf.ones(shape)Returns a tensor of shape shape with all elements set to 1

tf.ones_like(tensor)Returns a tensor of the same type and shape as tensor with all elements set to 1

tf.random_normal(shape,

mean, stddev)

Outputs random values from a normal distribution

tf.truncated_nor

mal(shape, mean,

stddev)

Outputs random values from a truncated normal distribution (values whose magnitude

is more than two standard deviations from the mean are dropped and re-picked)

tf.random_uni

form(shape, minval,

maxval)

Generates values from a uniform distribution in the range [minval, maxval)

tf.random_shuffle(ten

sor)

Randomly shues a tensor along its rst dimension

Matrix multiplication

This very useful arithmetic operation is performed in TensorFlow via the tf.mat

mul(A,B) function for two Tensor objects A and B.

Say we have a Tensor storing a matrix A and another storing a vector x, and we wish

to compute the matrix product of the two:

Ax = b

Before using matmul(), we need to make sure both have the same number of dimen‐

sions and that they are aligned correctly with respect to the intended multiplication.

In the following example, a matrix A and a vector x are created:

A = tf.constant([ [1,2,3],

[4,5,6] ])

print(a.get_shape())

x = tf.constant([1,0,1])

print(x.get_shape())

Out:

(2, 3)

(3,)

In order to multiply them, we need to add a dimension to x, transforming it from a

1D vector to a 2D single-column matrix.

36 | Chapter 3: Understanding TensorFlow Basics

We can add another dimension by passing the Tensor to tf.expand_dims(), together

with the position of the added dimension as the second argument. By adding another

dimension in the second position (index 1), we get the desired outcome:

x = tf.expand_dims(x,1)

print(x.get_shape())

b = tf.matmul(A,x)

sess = tf.InteractiveSession()

print('matmul result:\n {}'.format(b.eval()))

sess.close()

Out:

(3, 1)

matmul result:

[[ 4]

[10]]

If we want to flip an array, for example turning a column vector into a row vector or

vice versa, we can use the tf.transpose() function.

Names

Each Tensor object also has an identifying name. This name is an intrinsic string

name, not to be confused with the name of the variable. As with dtype, we can use

the .name attribute to see the name of the object:

with tf.Graph().as_default():

c1 = tf.constant(4,dtype=tf.float64,name='c')

c2 = tf.constant(4,dtype=tf.int32,name='c')

print(c1.name)

print(c2.name)

Out:

c:0

c_1:0

The name of the Tensor object is simply the name of its corresponding operation (“c”;

concatenated with a colon), followed by the index of that tensor in the outputs of the

operation that produced it—it is possible to have more than one.

Flowing Tensors | 37

Duplicate names

Objects residing within the same graph cannot have the same name

—TensorFlow forbids it. As a consequence, it will automatically

add an underscore and a number to distinguish the two. Of course,

both objects can have the same name when they are associated with

different graphs.

Name scopes

Sometimes when dealing with a large, complicated graph, we would like to create

some node grouping to make it easier to follow and manage. For that we can hier‐

archically group nodes together by name. We do so by using tf.name_scope("pre

fix") together with the useful with clause again:

with tf.Graph().as_default():

c1 = tf.constant(4,dtype=tf.float64,name='c')

with tf.name_scope("prefix_name"):

c2 = tf.constant(4,dtype=tf.int32,name='c')

c3 = tf.constant(4,dtype=tf.float64,name='c')

print(c1.name)

print(c2.name)

print(c3.name)

Out:

c:0

prefix_name/c:0

prefix_name/c_1:0

In this example we’ve grouped objects contained in variables c2 and c3 under the