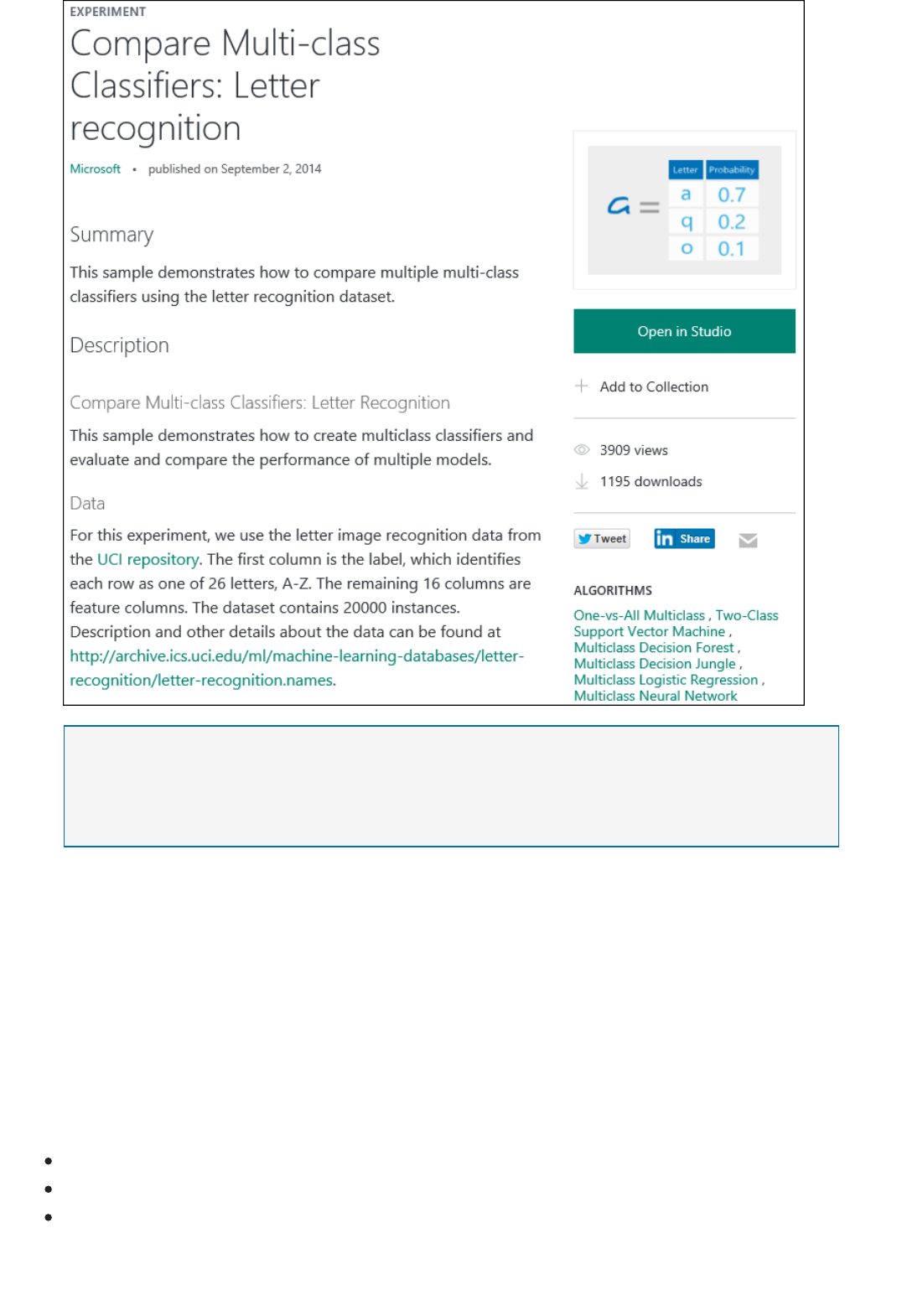

What Is Azure Machine Learning Studio? | Microsoft Docs Manual Azura

User Manual:

Open the PDF directly: View PDF ![]() .

.

Page Count: 421 [warning: Documents this large are best viewed by clicking the View PDF Link!]

- Cover Page

- Machine Learning Studio Documentation

- Overview

- Get Started

- How To

- Set up tools and utilities

- Acquire and understand data

- Develop models

- Operationalize models

- Examples

- Reference

- Related

- Resources

Table of ContentsTable of Contents

Machine Learning Studio Documentation

Overview

Machine Learning Studio

ML Studio capabilities

ML Studio basics (infographic)

Frequently asked questions

What's new?

Get Started

Create your first experiment

Example walkthrough

Create a predictive solution

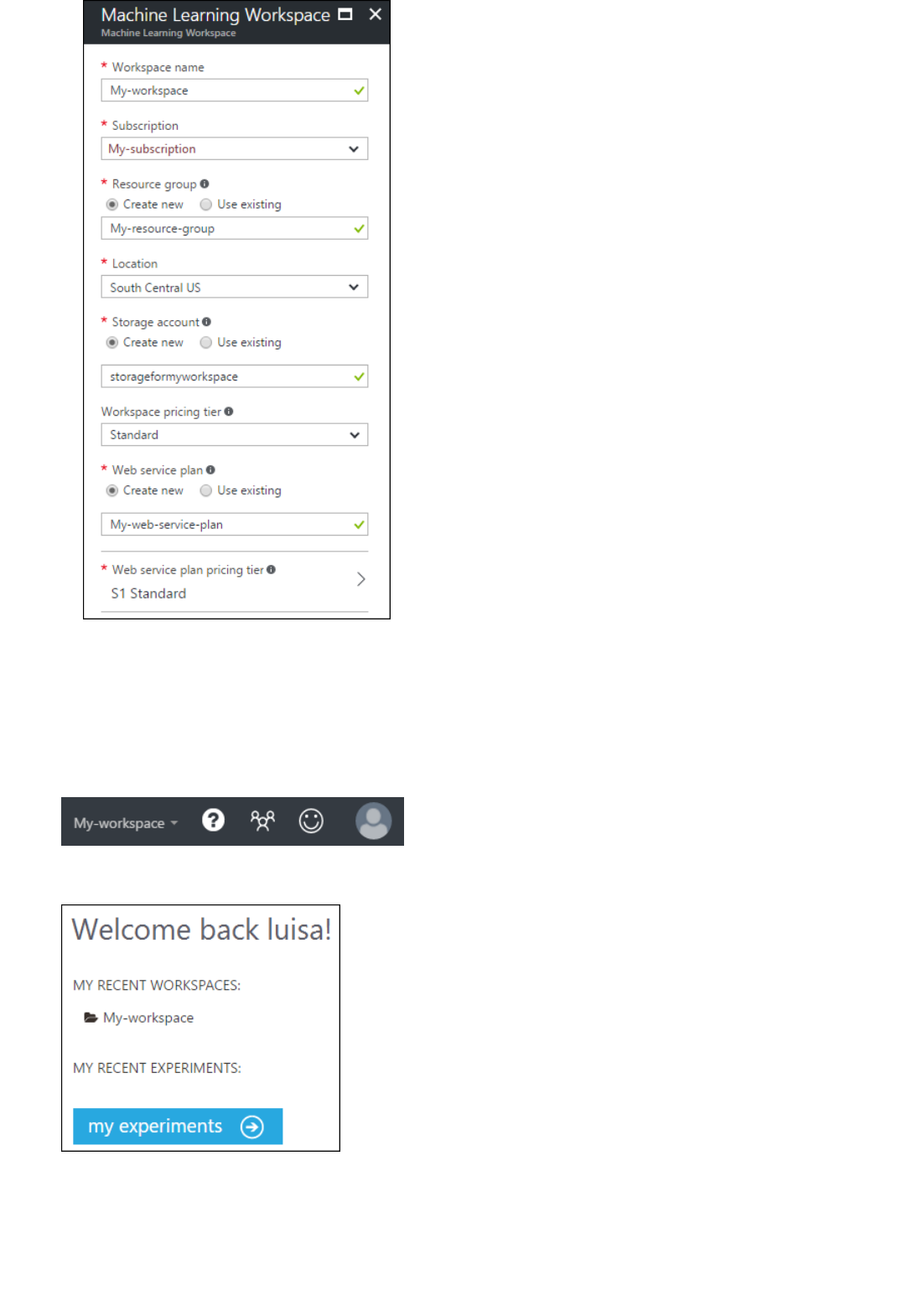

1 - Create a workspace

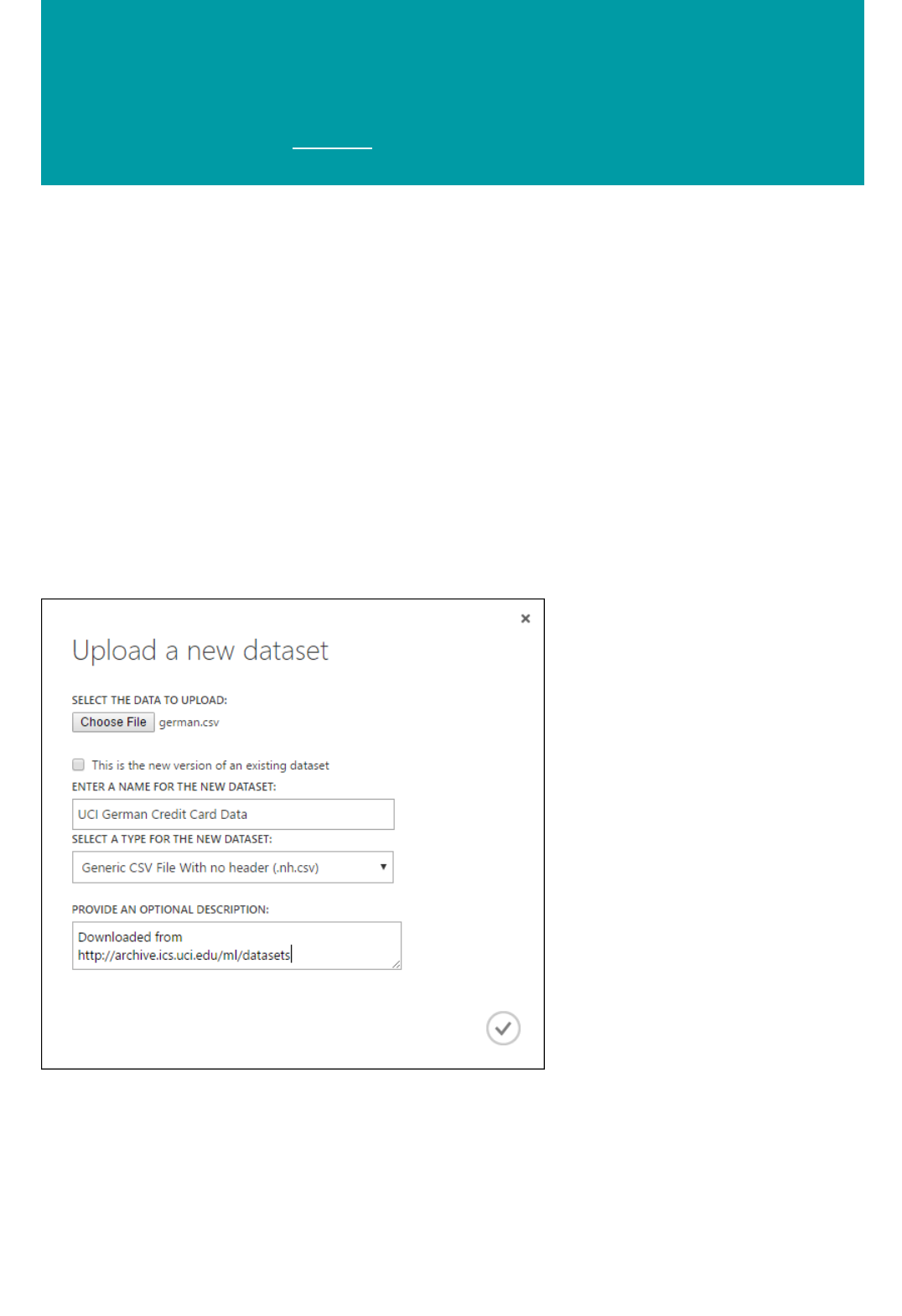

2 - Upload data

3 - Create experiment

4 - Train and evaluate

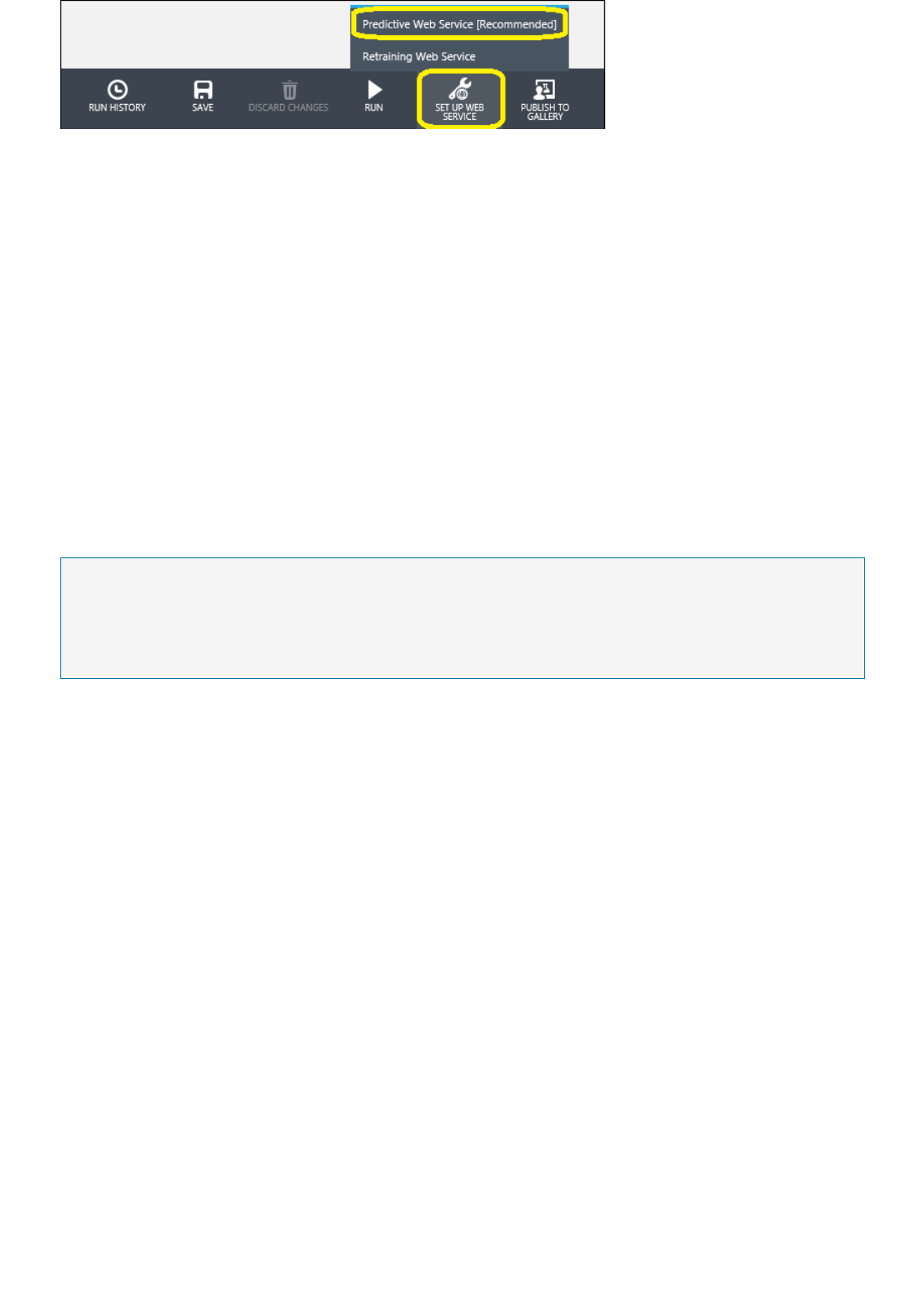

5 - Deploy web service

6 - Access web service

Data Science for Beginners

1 - Five questions

2 - Is your data ready?

3 - Ask the right question

4 - Predict an answer

5 - Copy other people's work

R quick start

How To

Set up tools and utilities

Manage a workspace

Acquire and understand data

Import training data

Develop models

Create and train models

Operationalize models

Overview

Deploy models

Manage web services

Retrain models

Consume models

Examples

Sample experiments

Sample datasets

Customer churn example

Reference

Azure PowerShell module (New)

Azure PowerShell module (Classic)

Algorithm & Module reference

REST management APIs

Web service error codes

Related

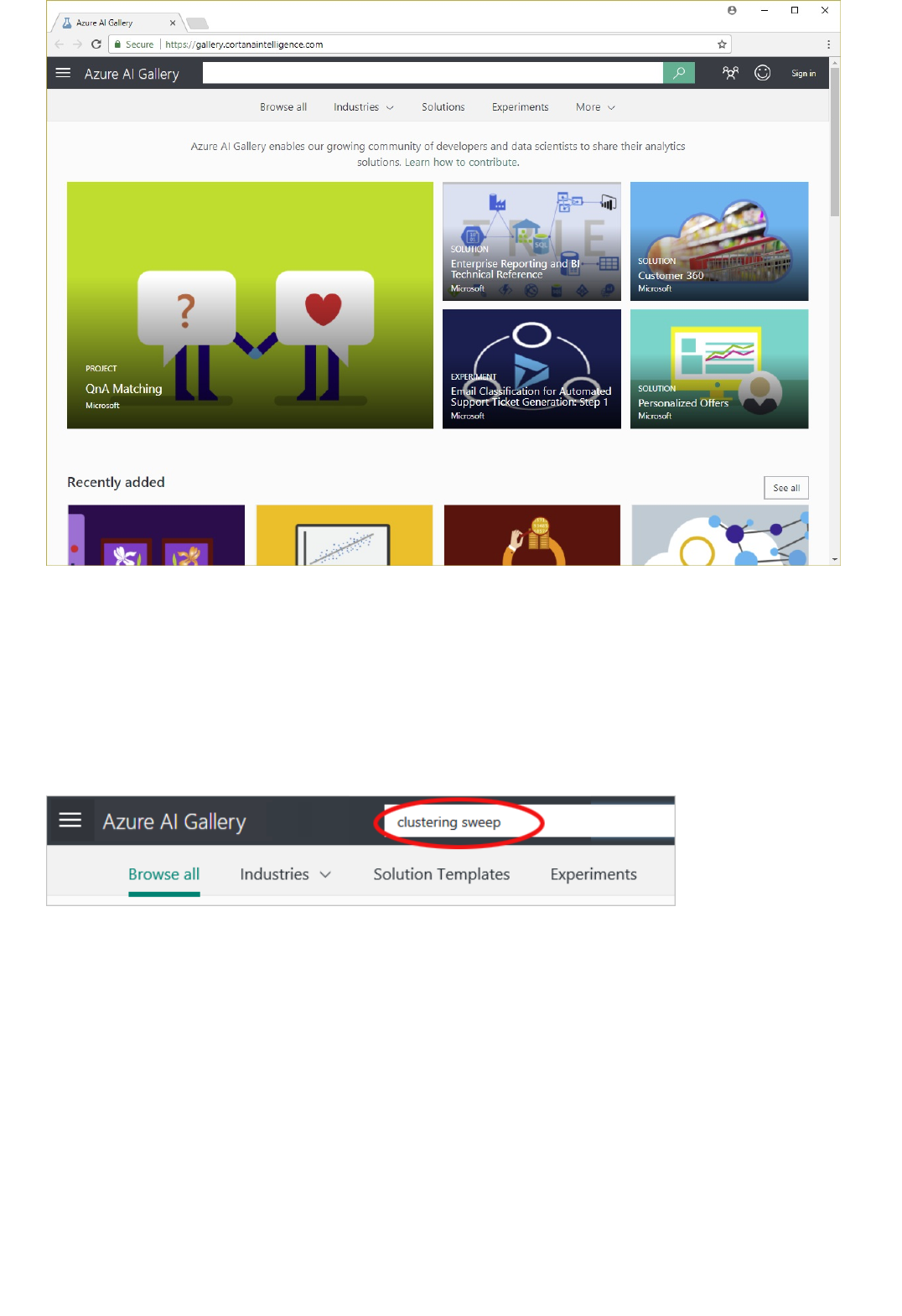

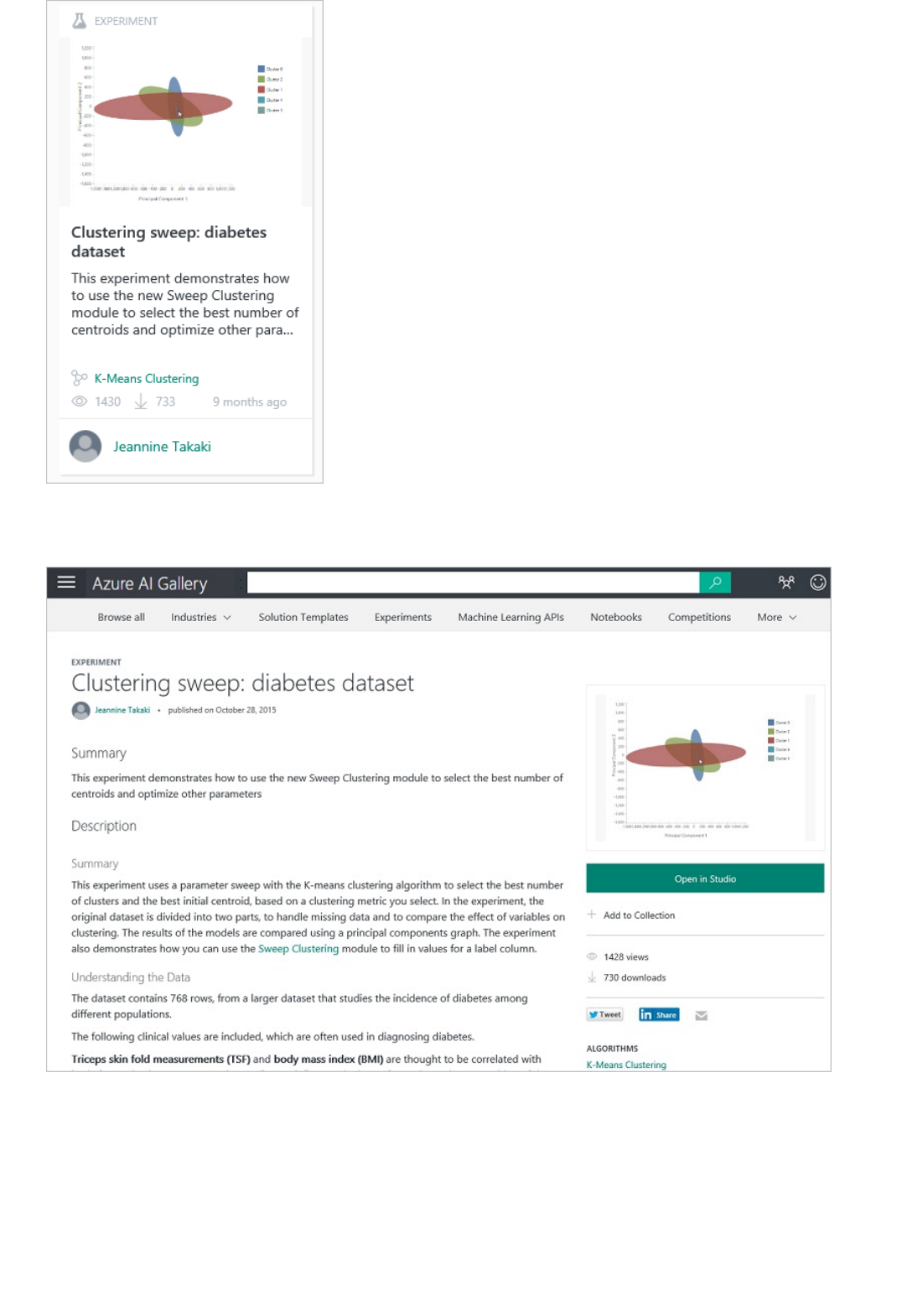

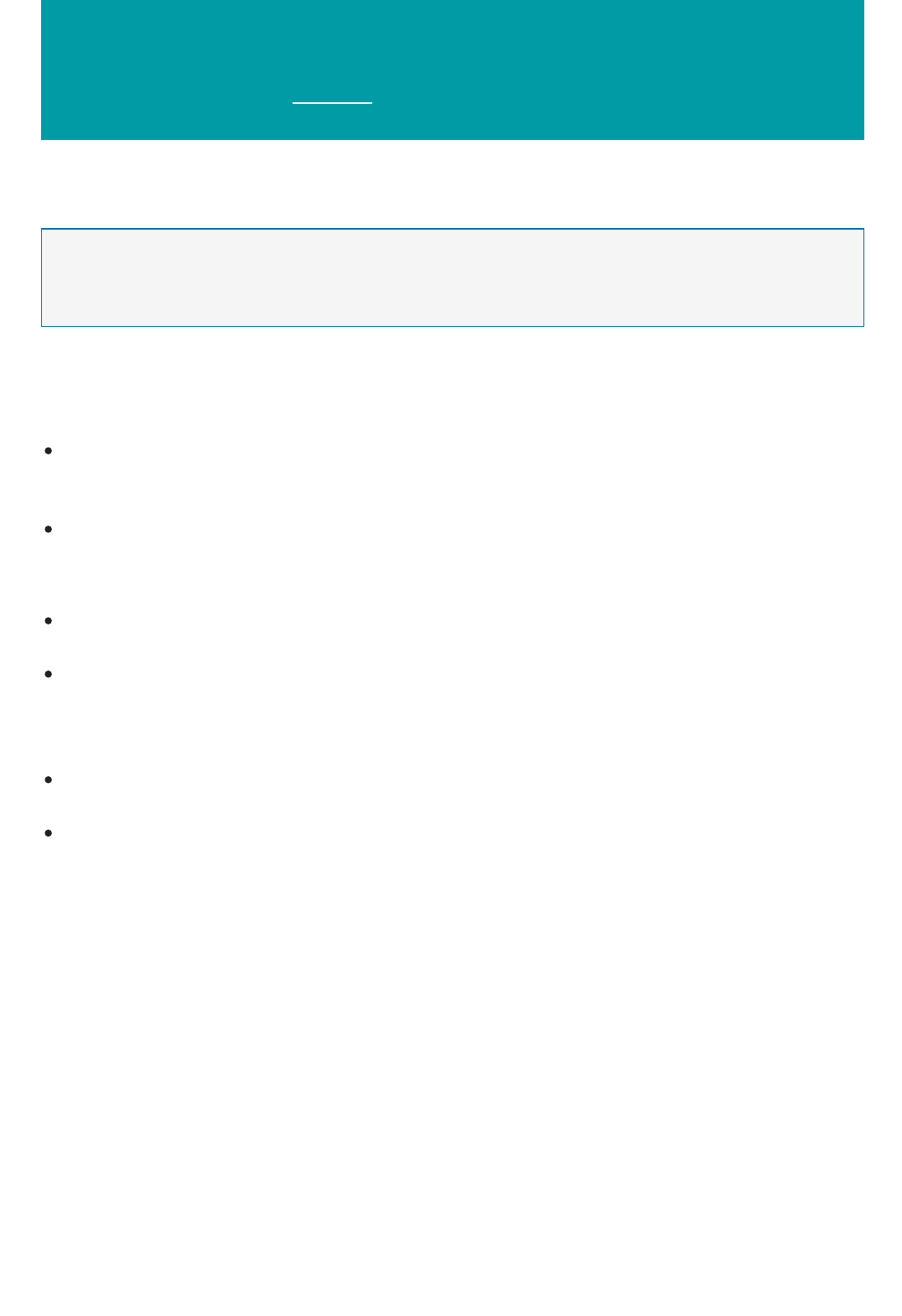

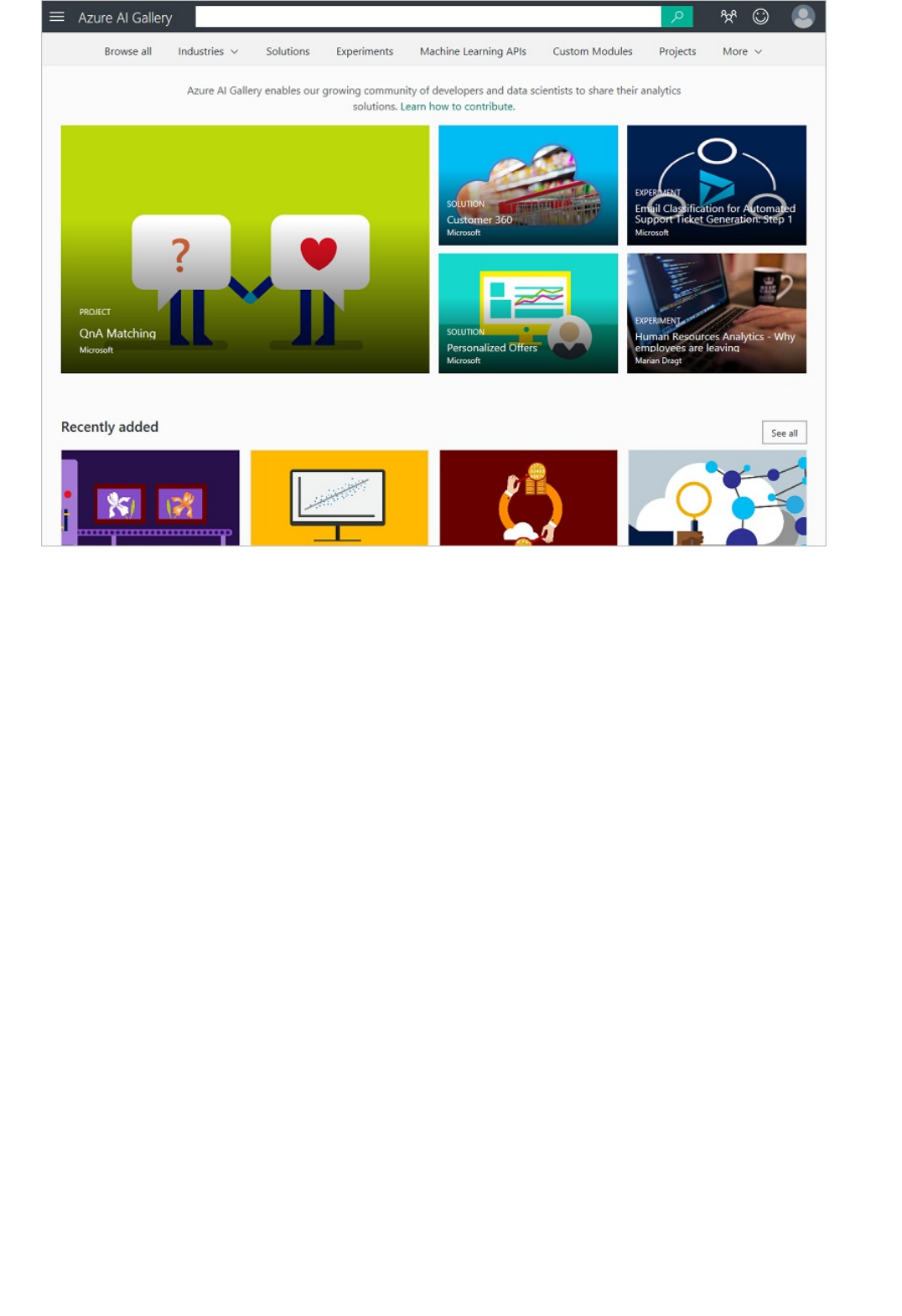

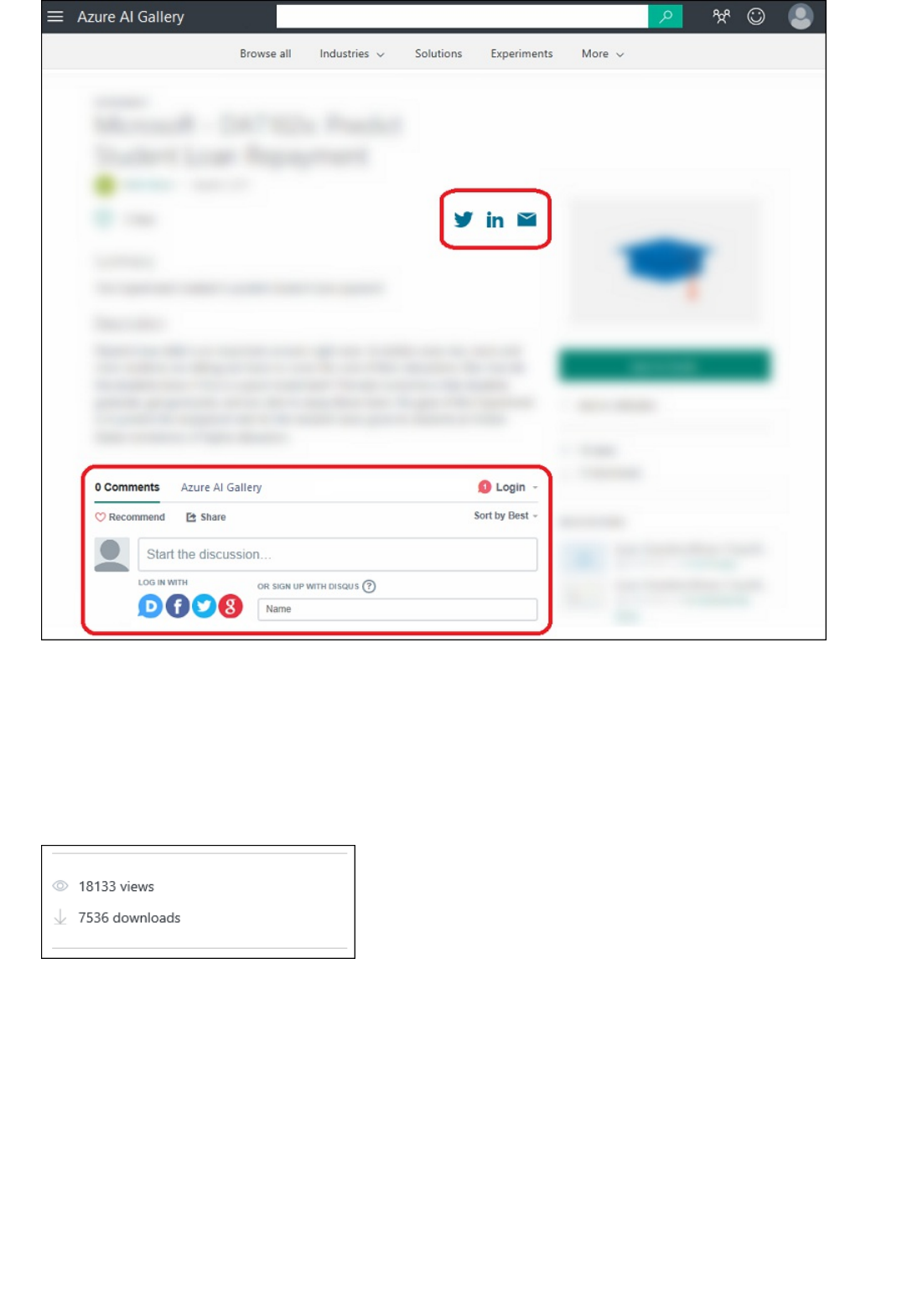

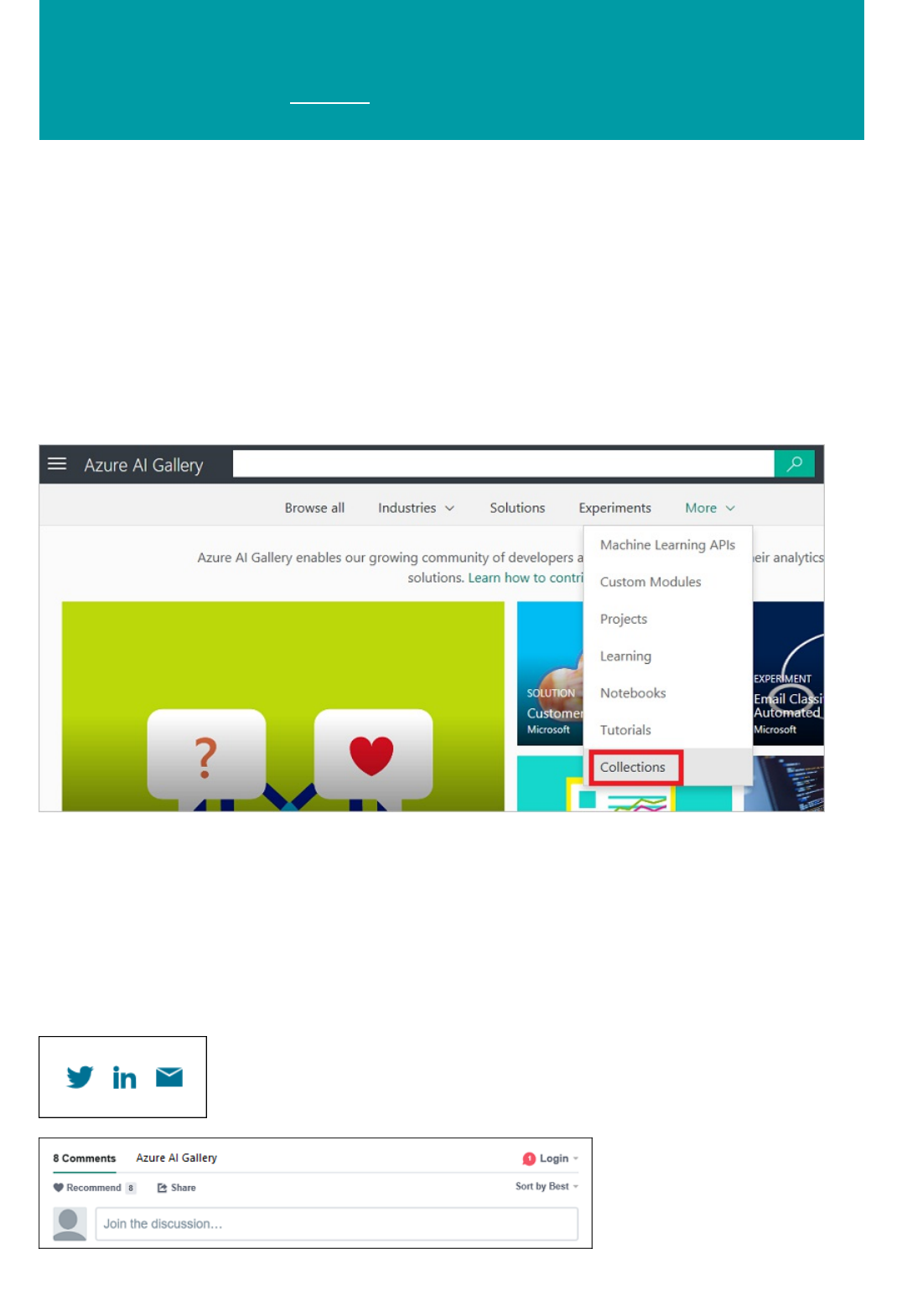

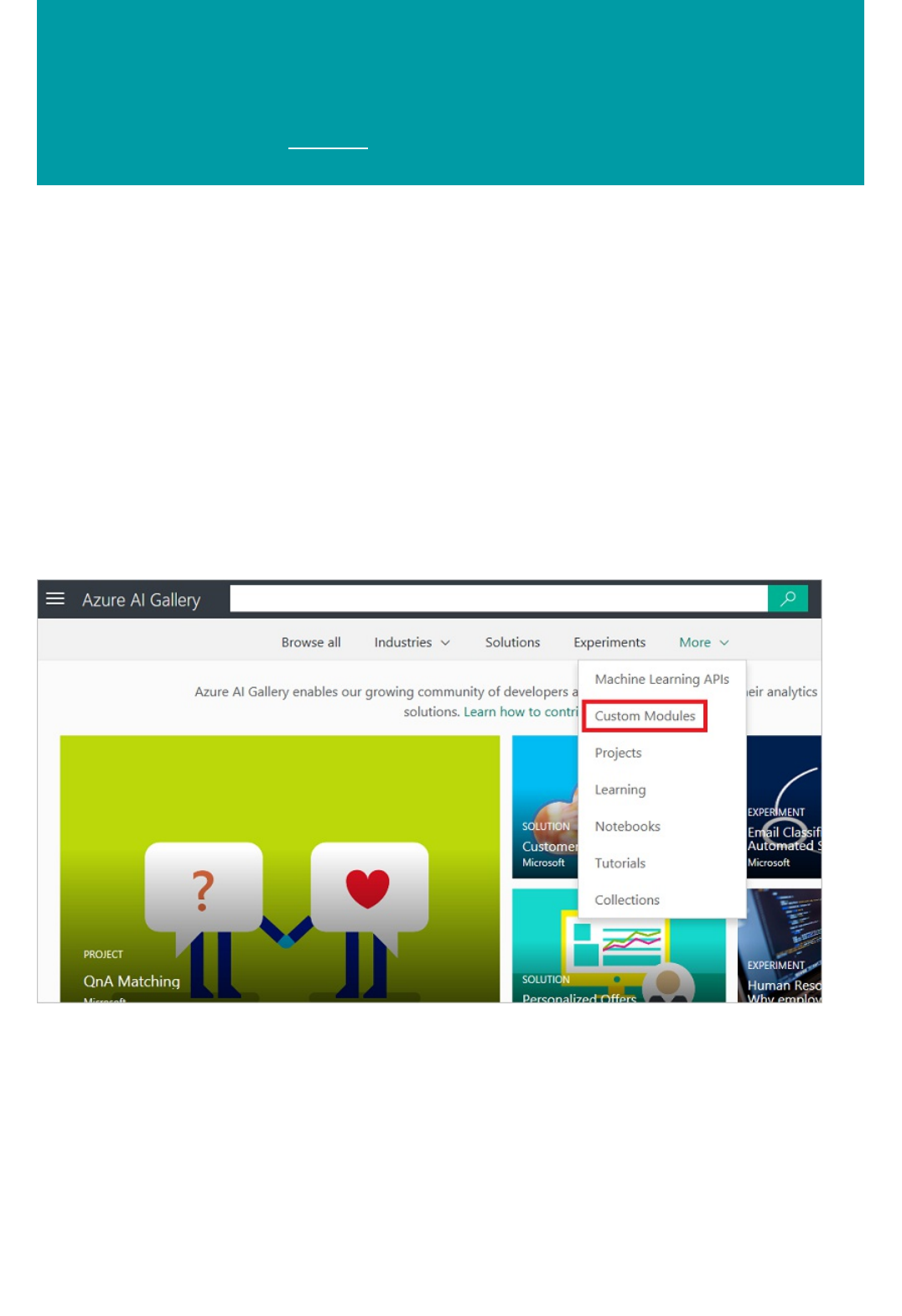

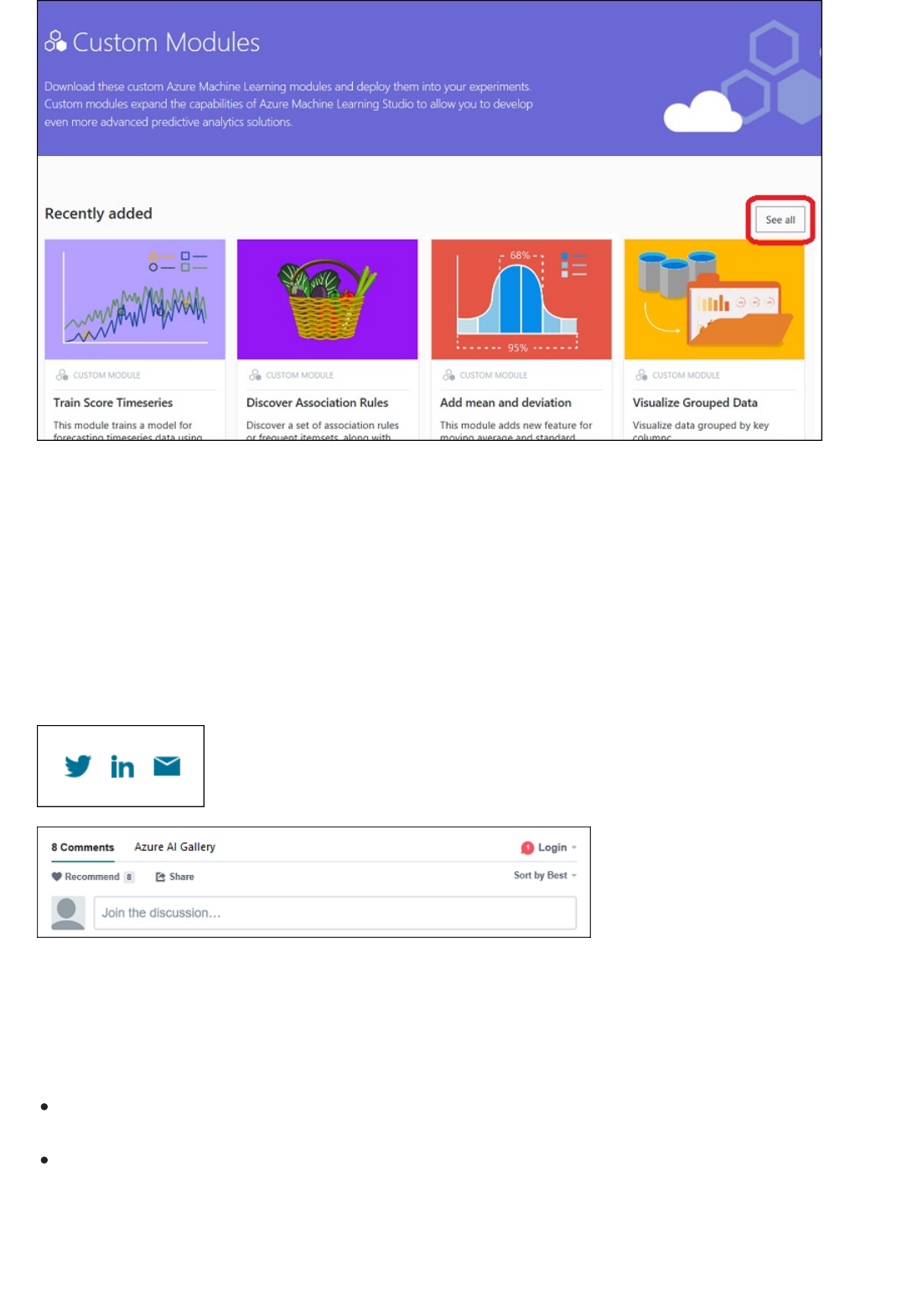

Azure AI Gallery

Overview

Industries

Solutions

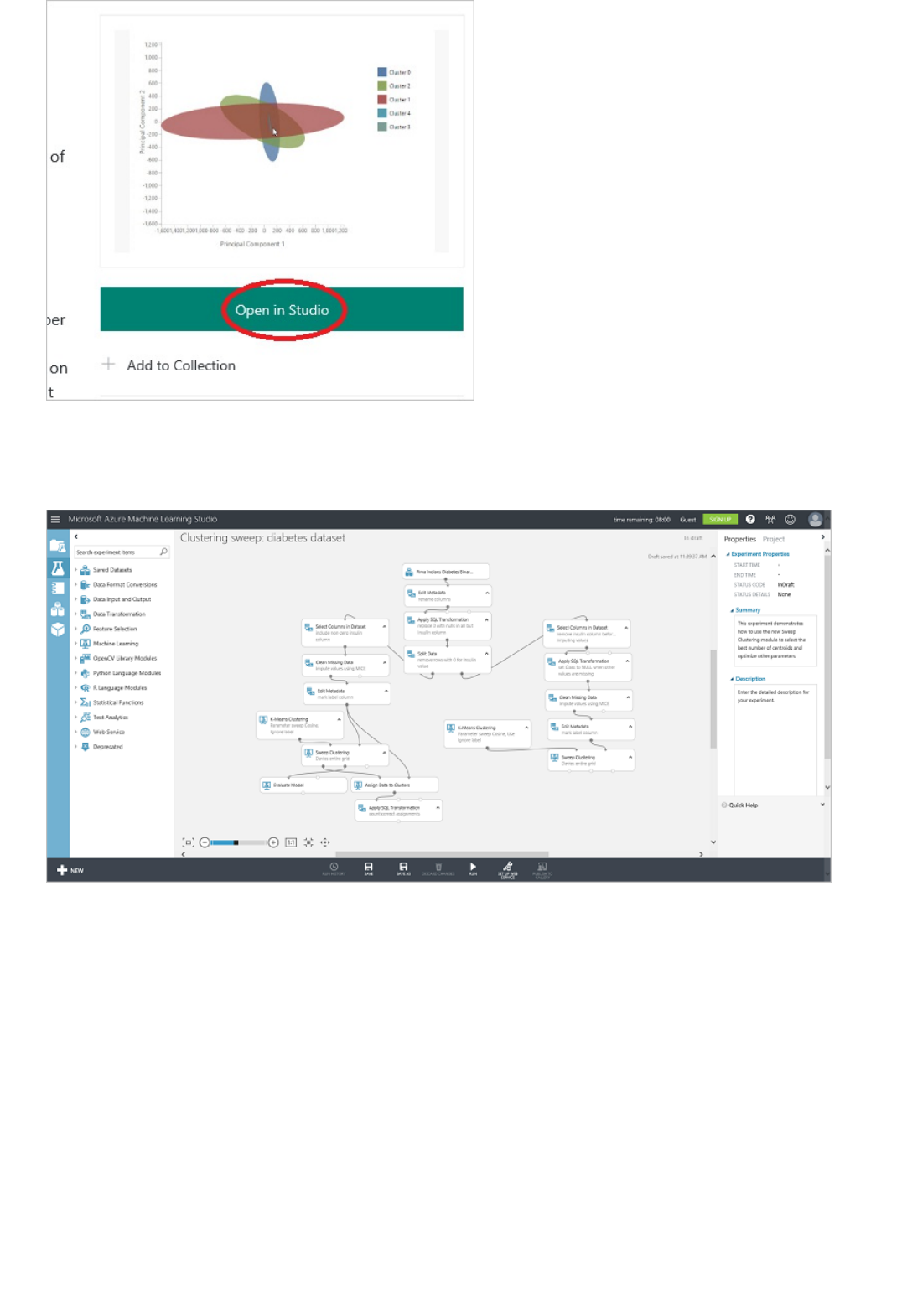

Experiments

Jupyter Notebooks

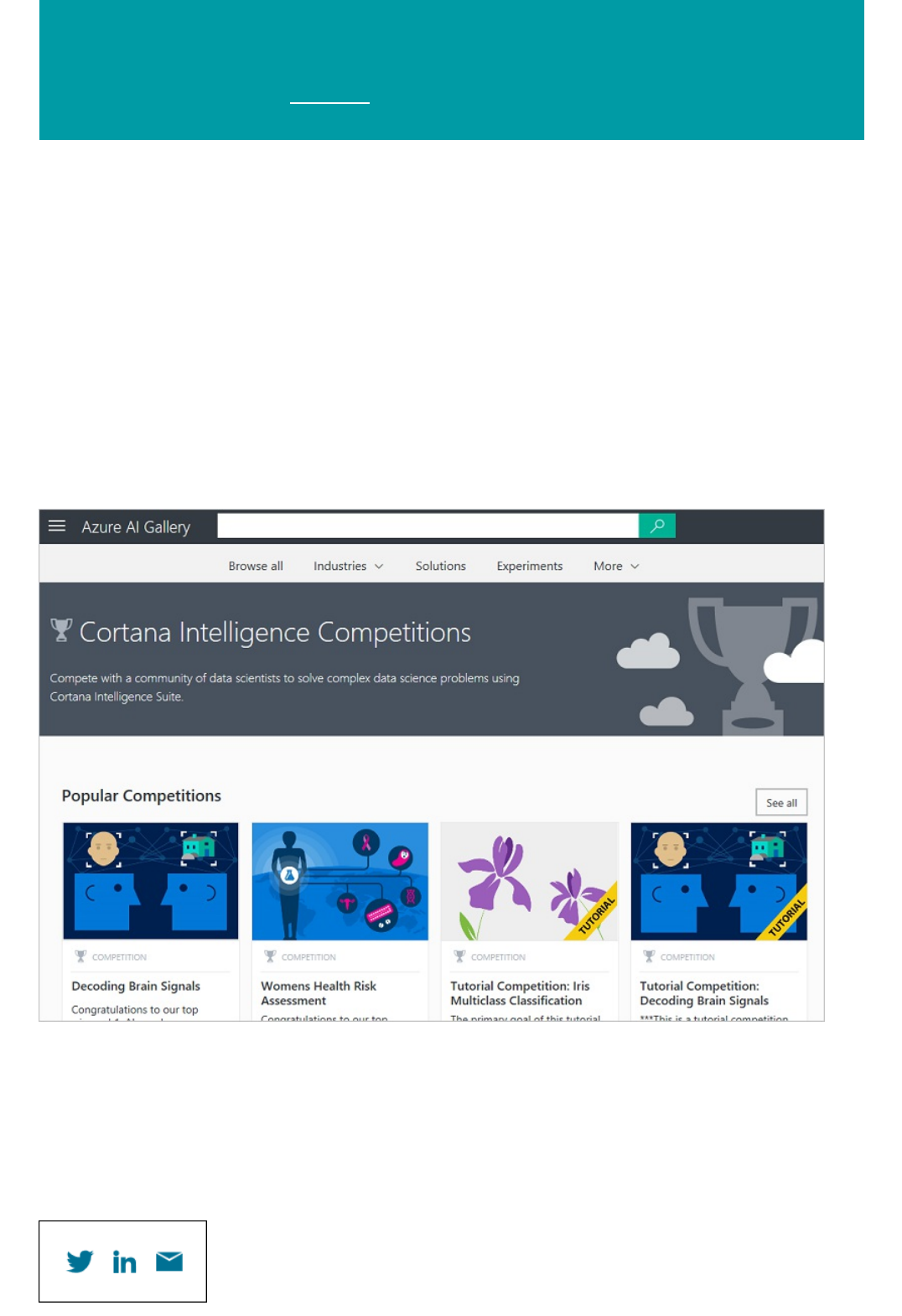

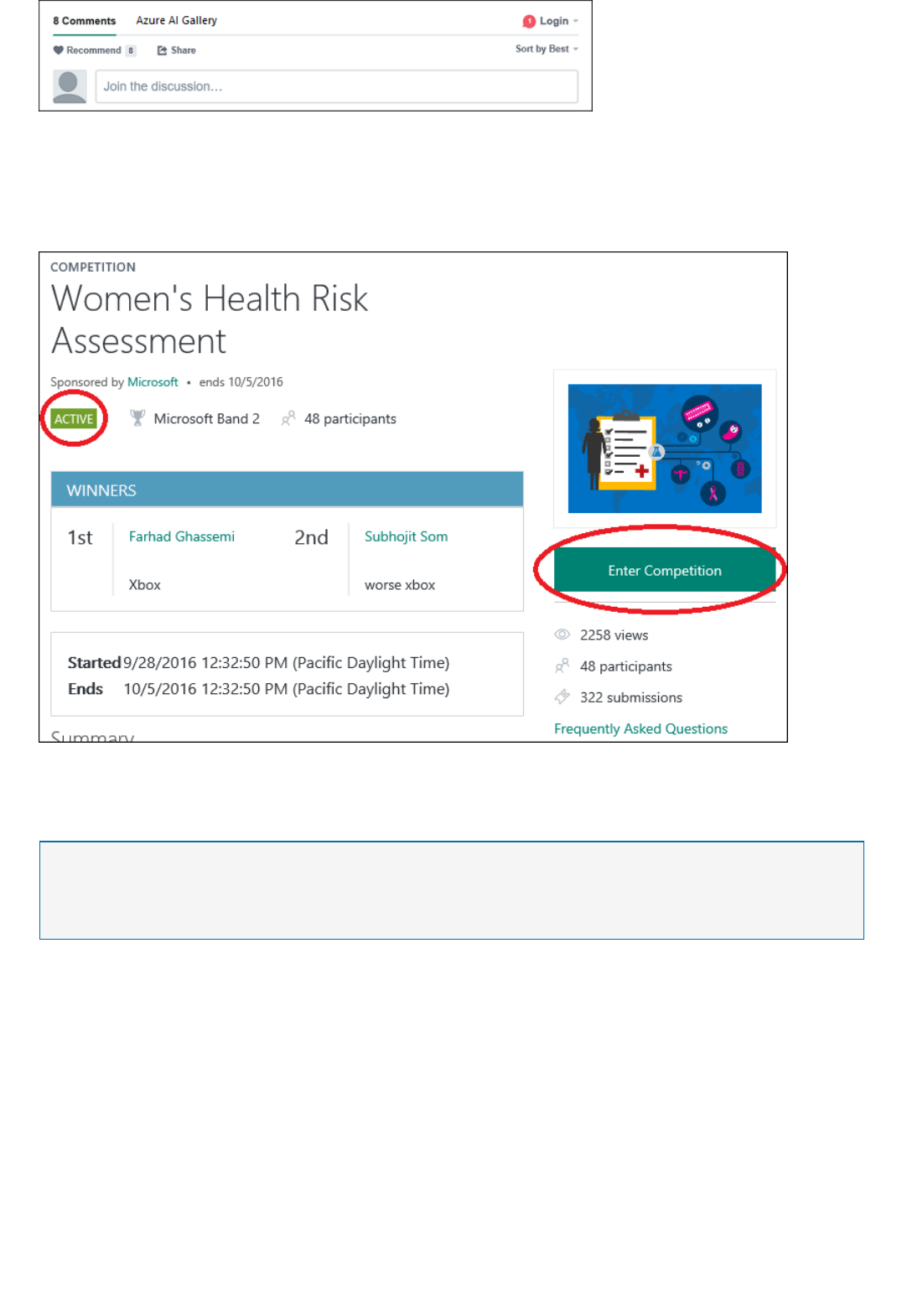

Competitions

Competitions FAQ

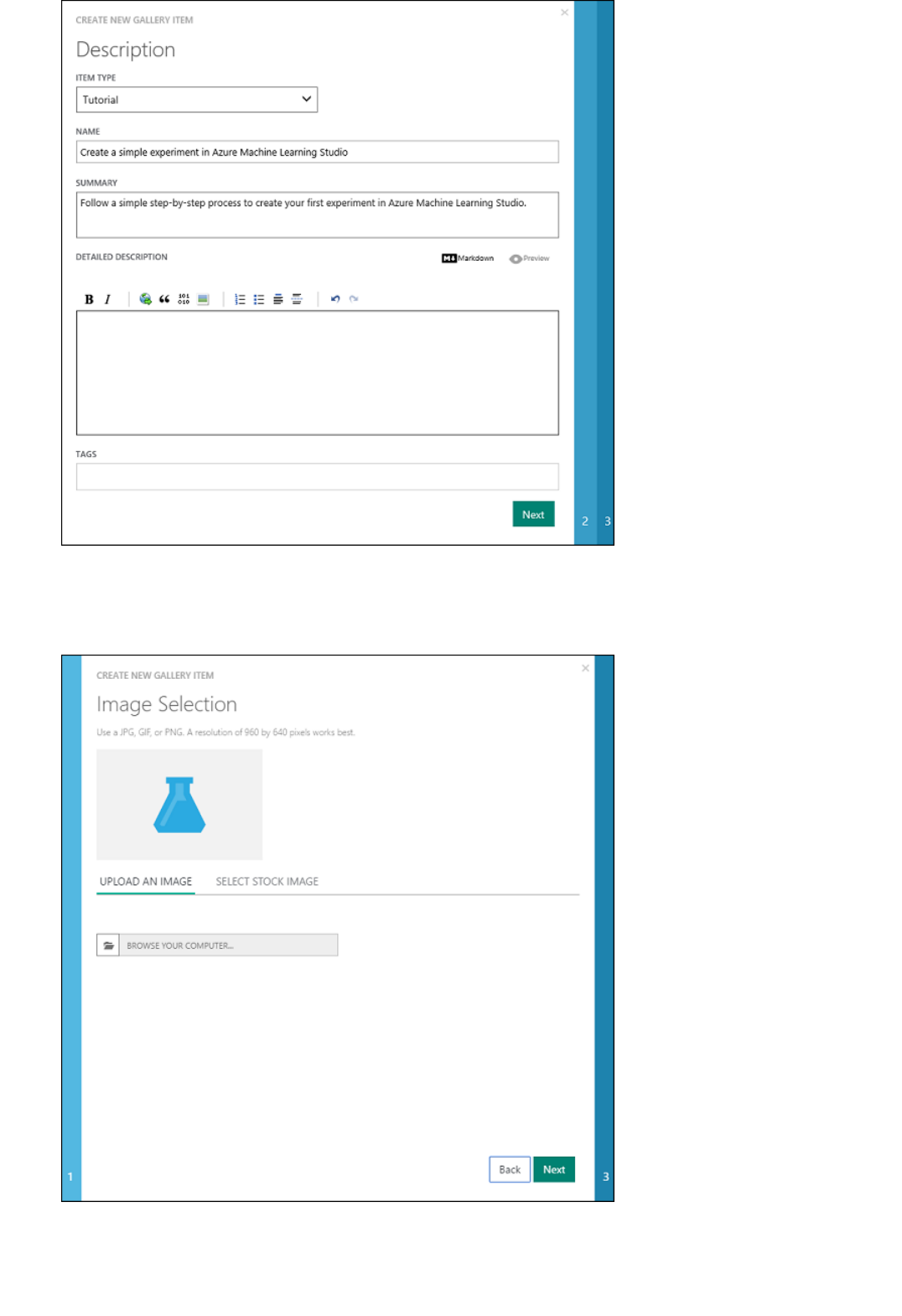

Tutorials

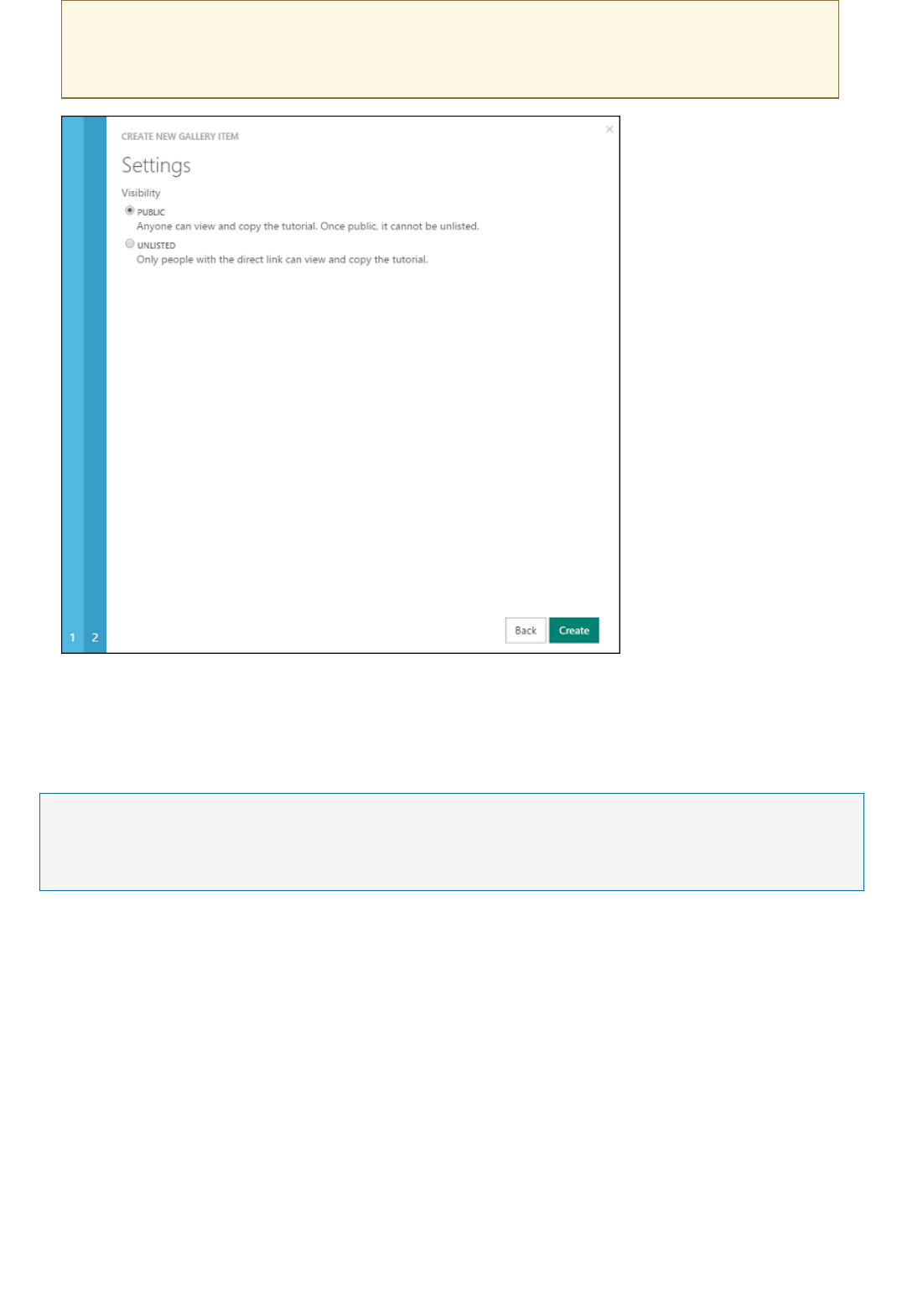

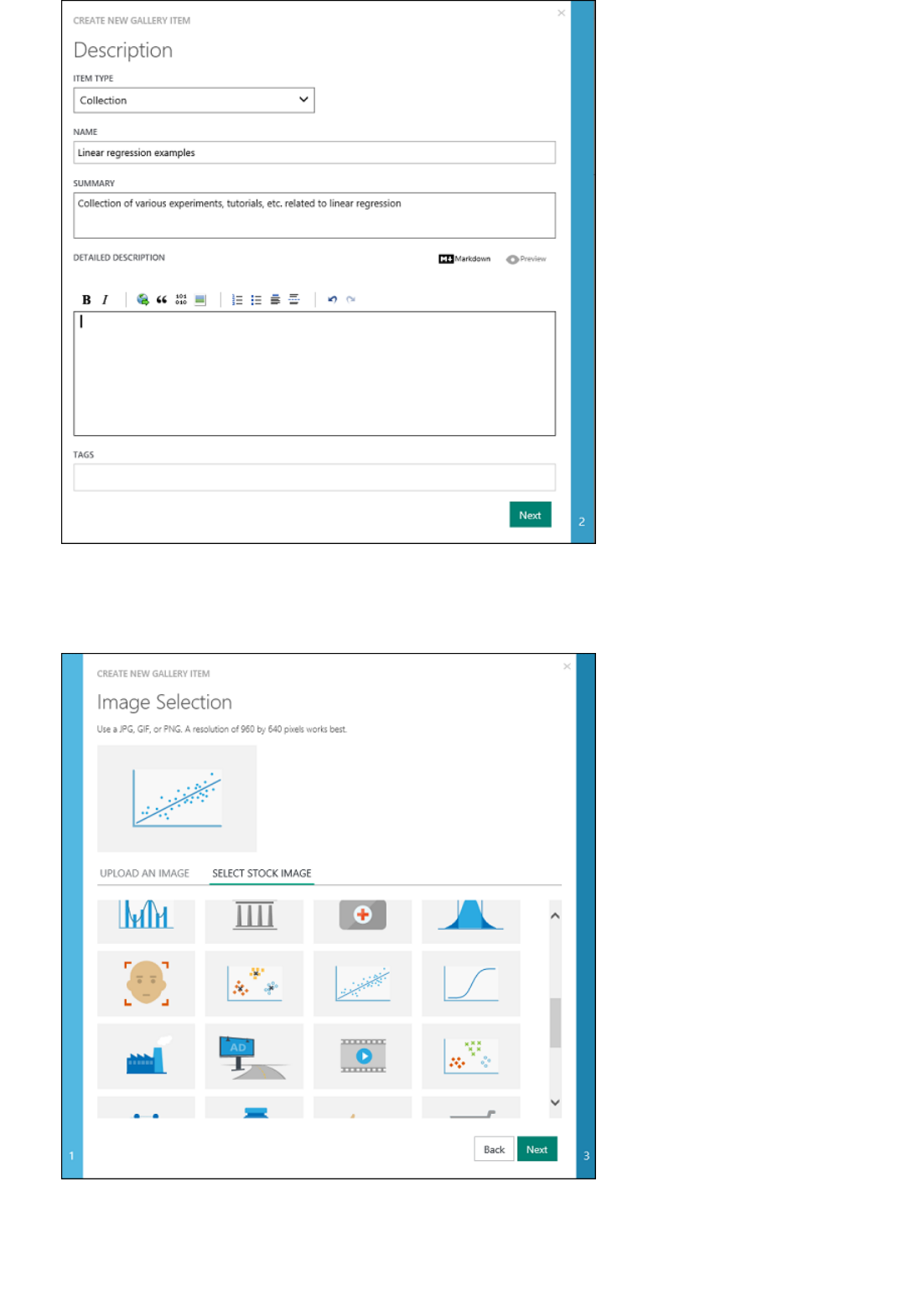

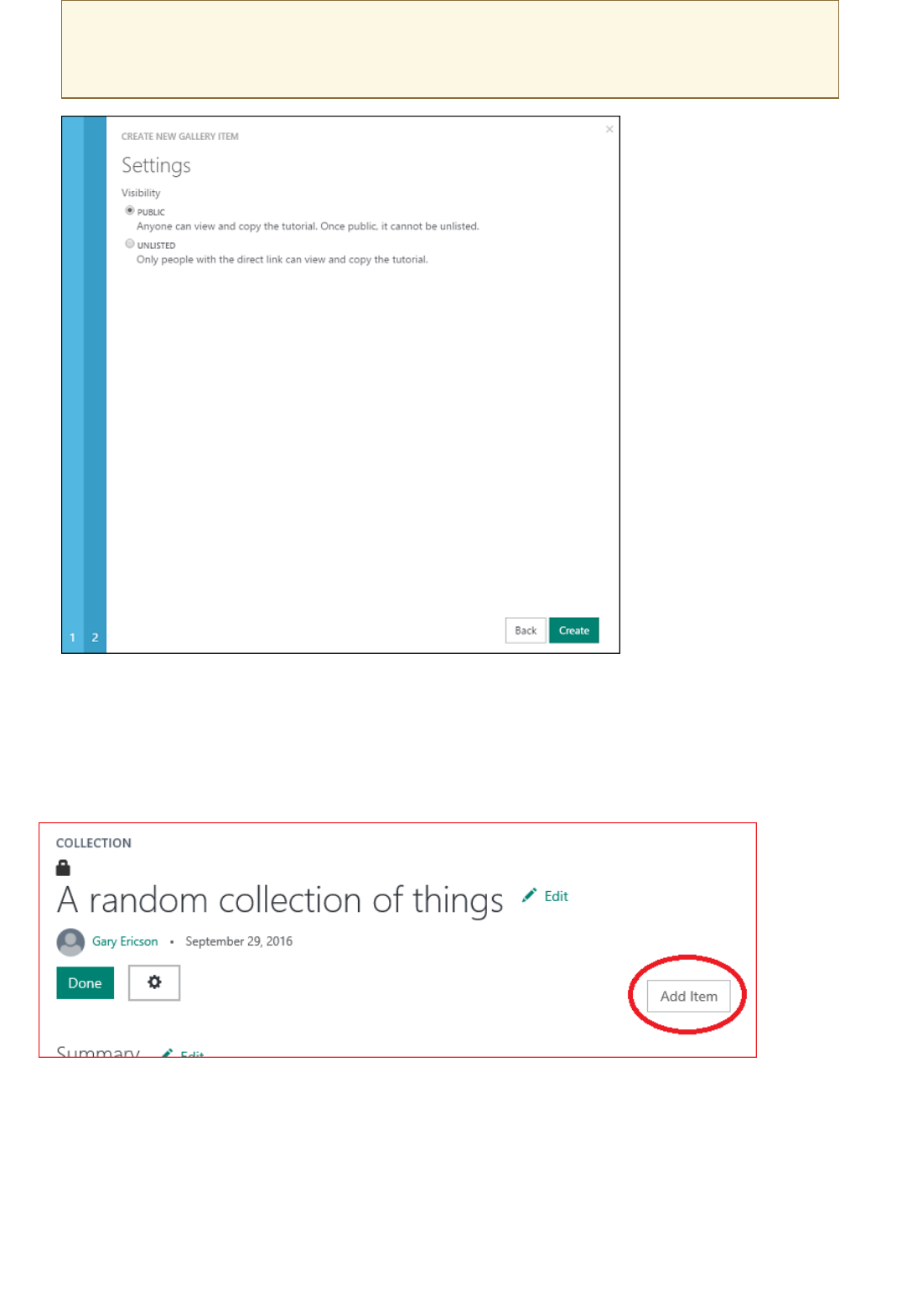

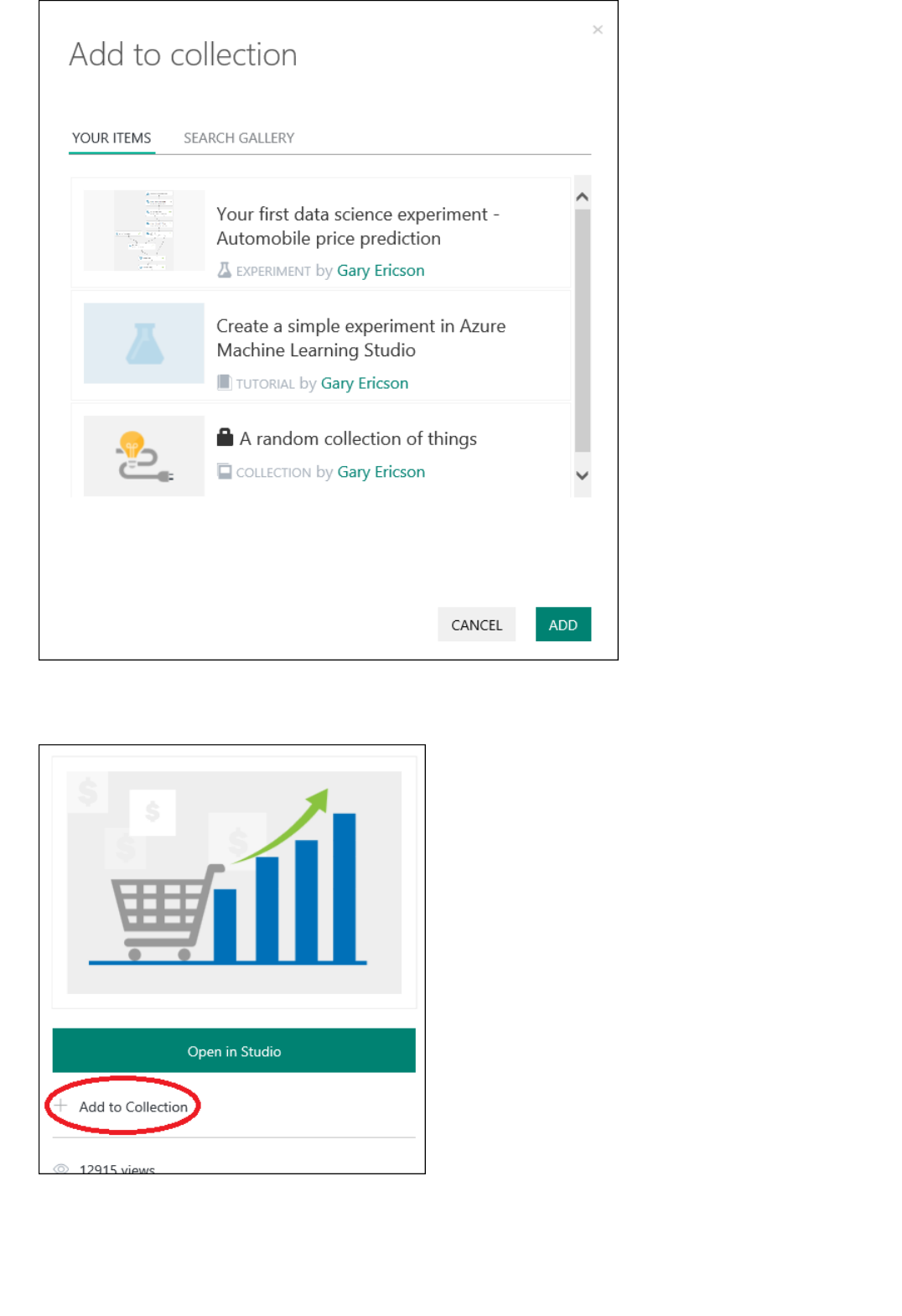

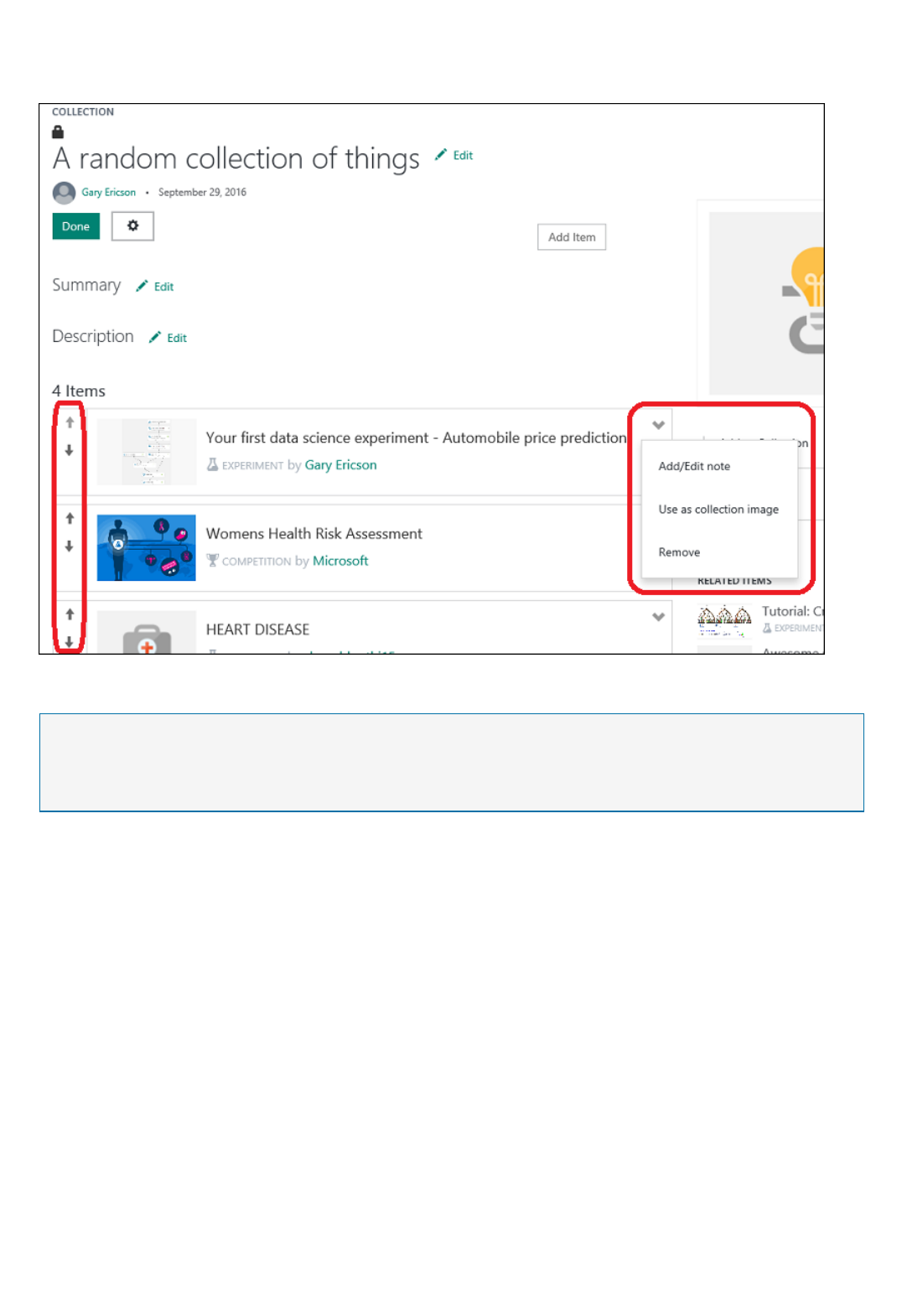

Collections

Custom Modules

Resources

Azure Roadmap

What is Azure Machine Learning Studio?

4/9/2018 • 9 min to read • Edit Online

NOTENOTE

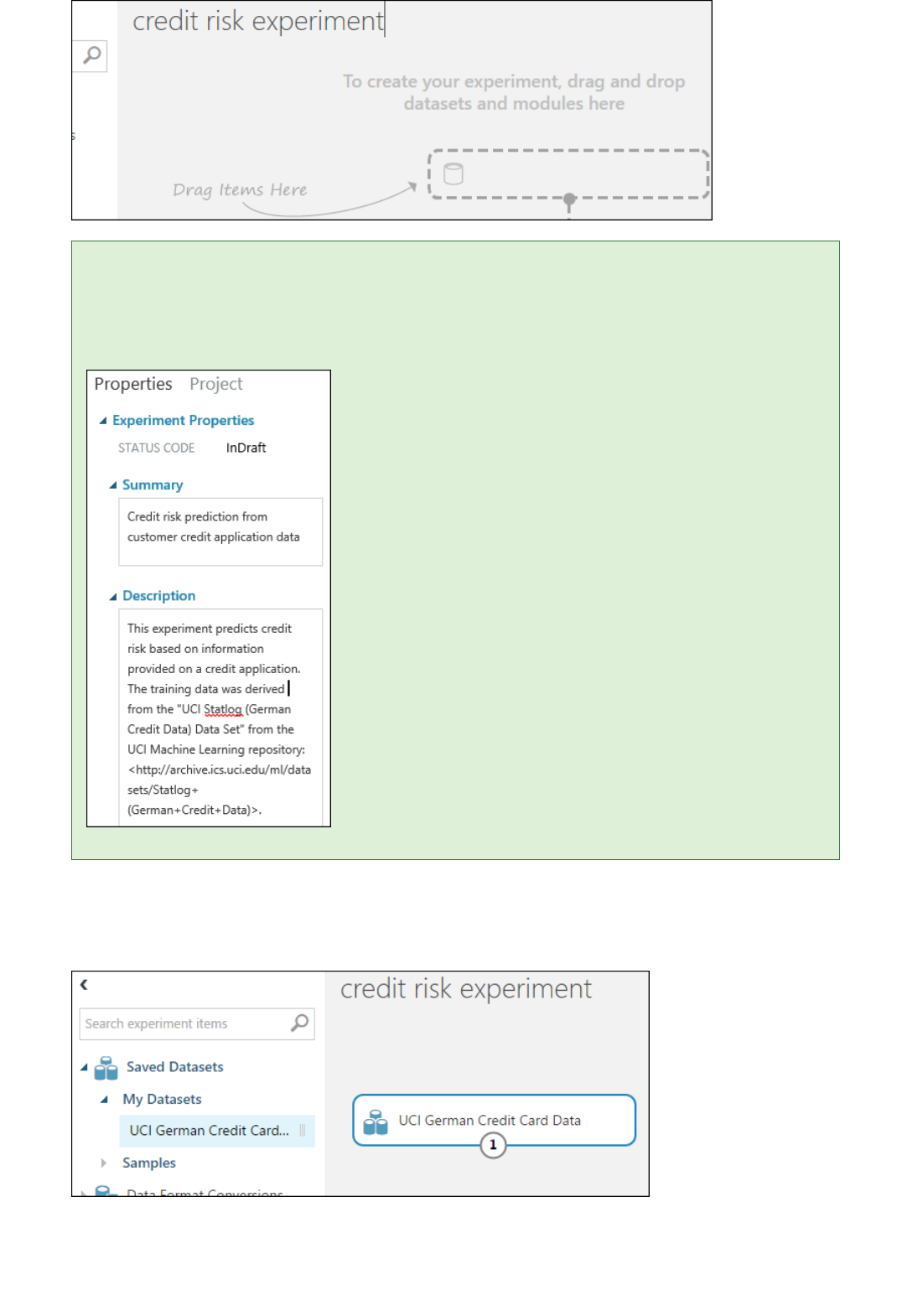

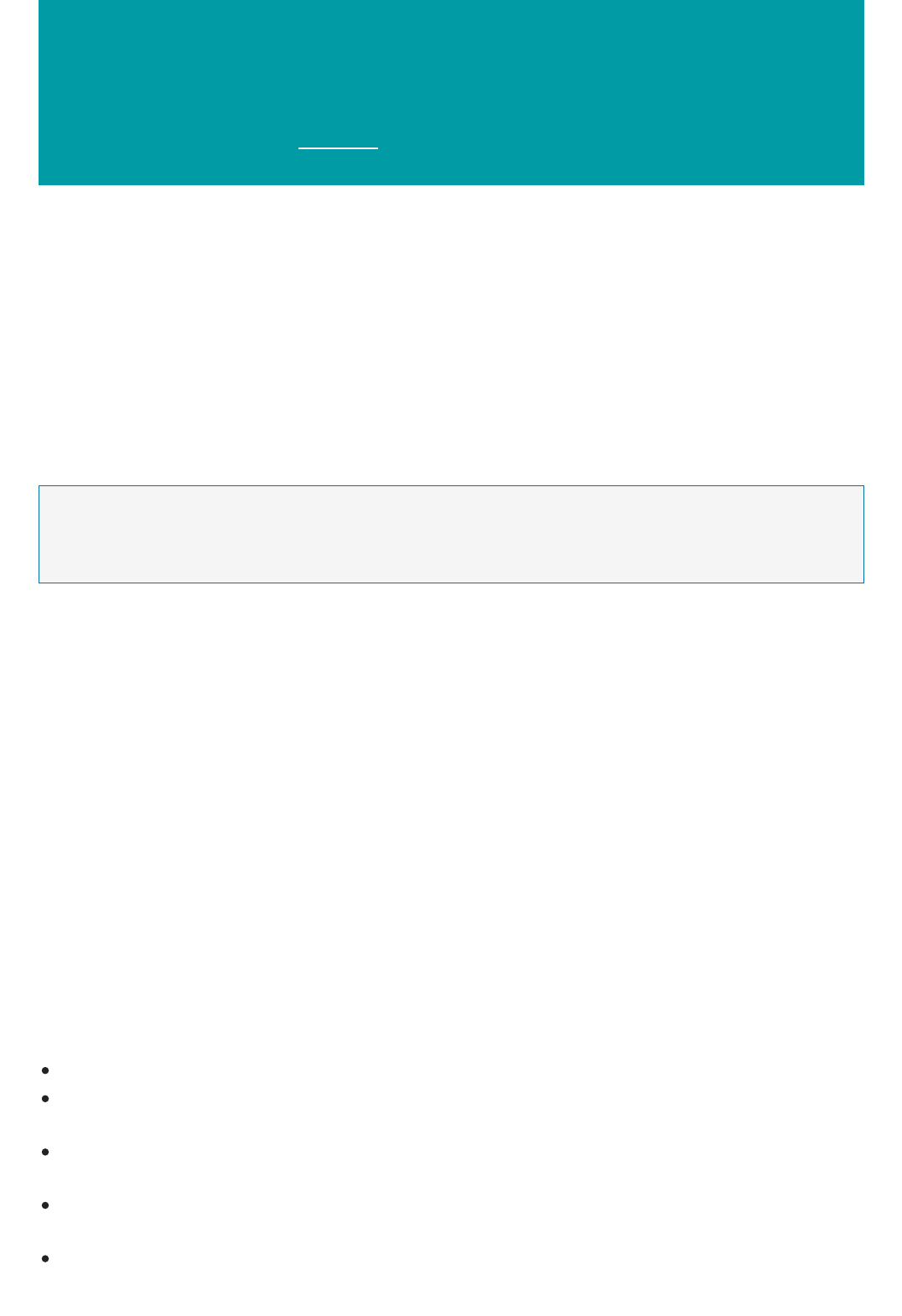

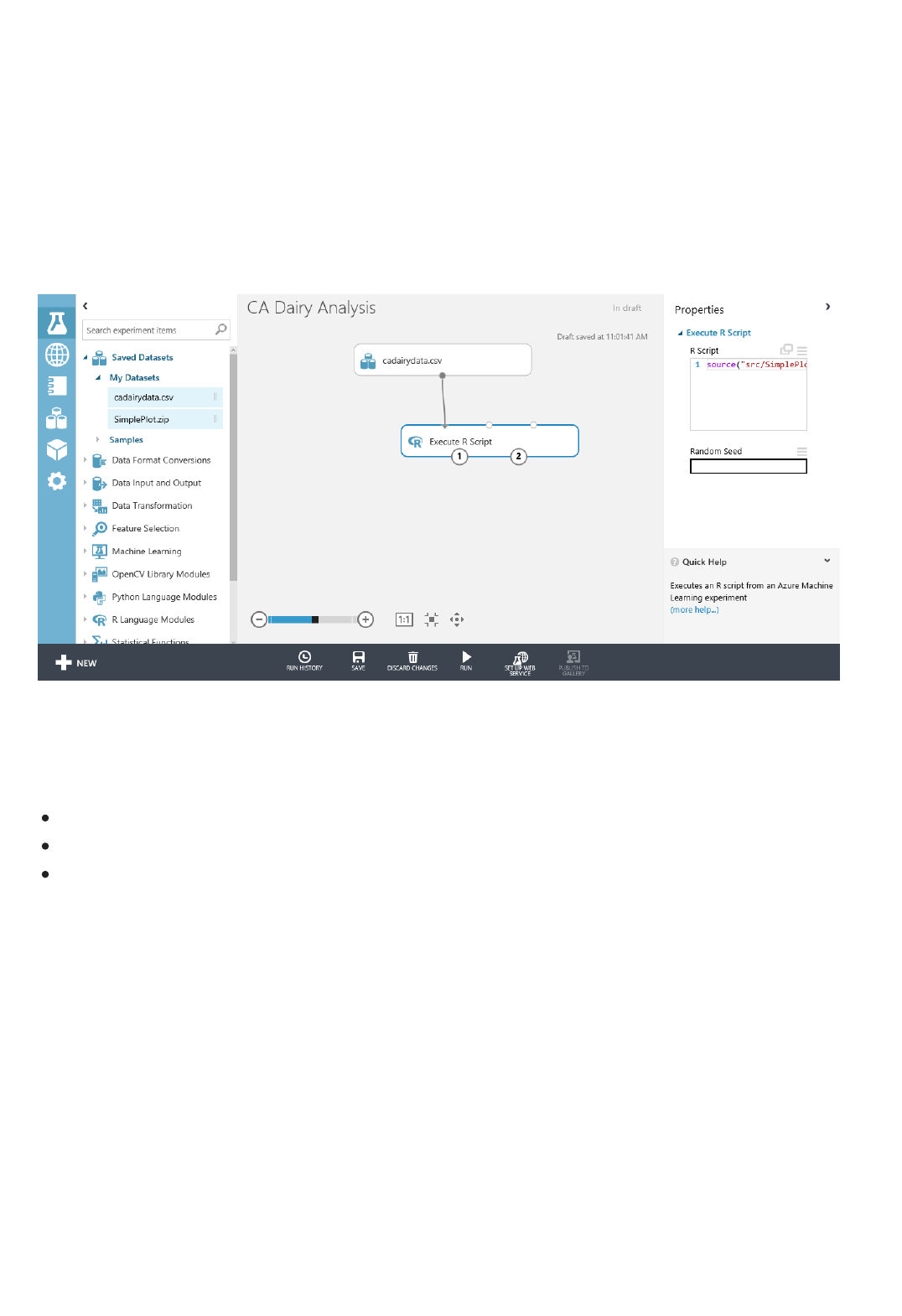

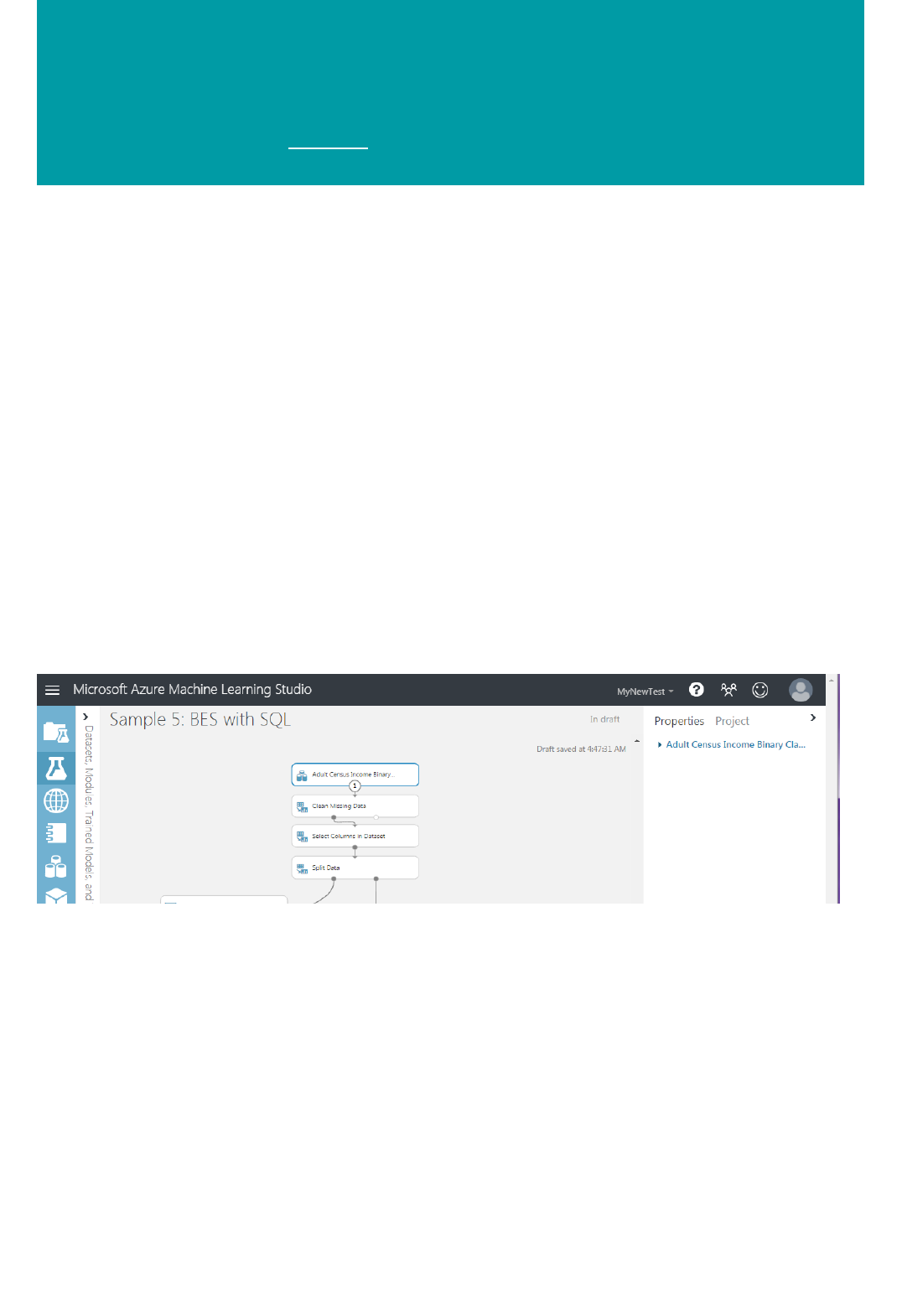

The Machine Learning Studio interactive workspace

TIPTIP

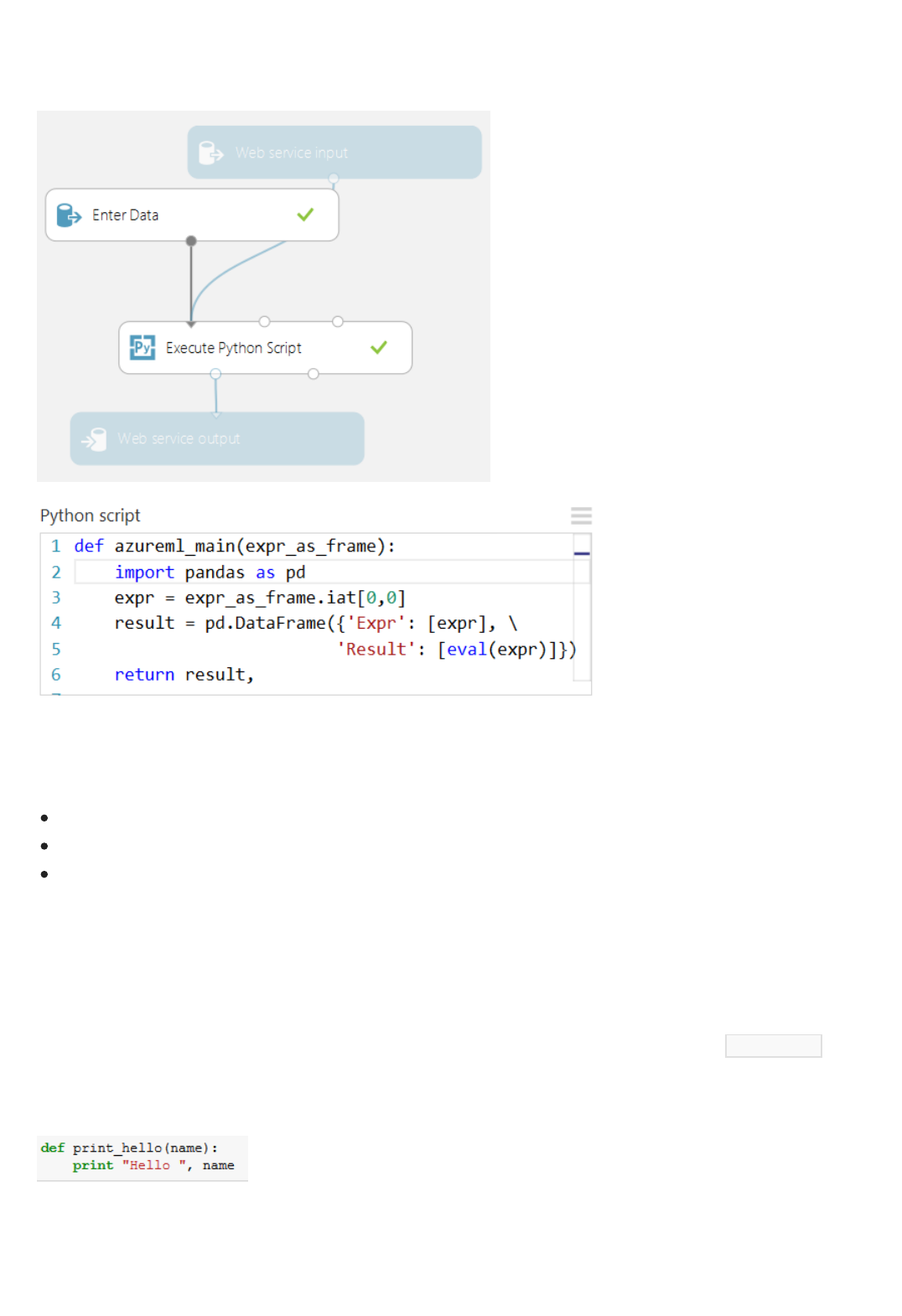

Microsoft Azure Machine Learning Studio is a collaborative, drag-and-drop tool you can use to build, test, and

deploy predictive analytics solutions on your data. Machine Learning Studio publishes models as web services that

can easily be consumed by custom apps or BI tools such as Excel.

Machine Learning Studio is where data science, predictive analytics, cloud resources, and your data meet.

You can try Azure Machine Learning for free. No credit card or Azure subscription is required. Get started now.

To develop a predictive analysis model, you typically use data from one or more sources, transform and analyze

that data through various data manipulation and statistical functions, and generate a set of results. Developing a

model like this is an iterative process. As you modify the various functions and their parameters, your results

converge until you are satisfied that you have a trained, effective model.

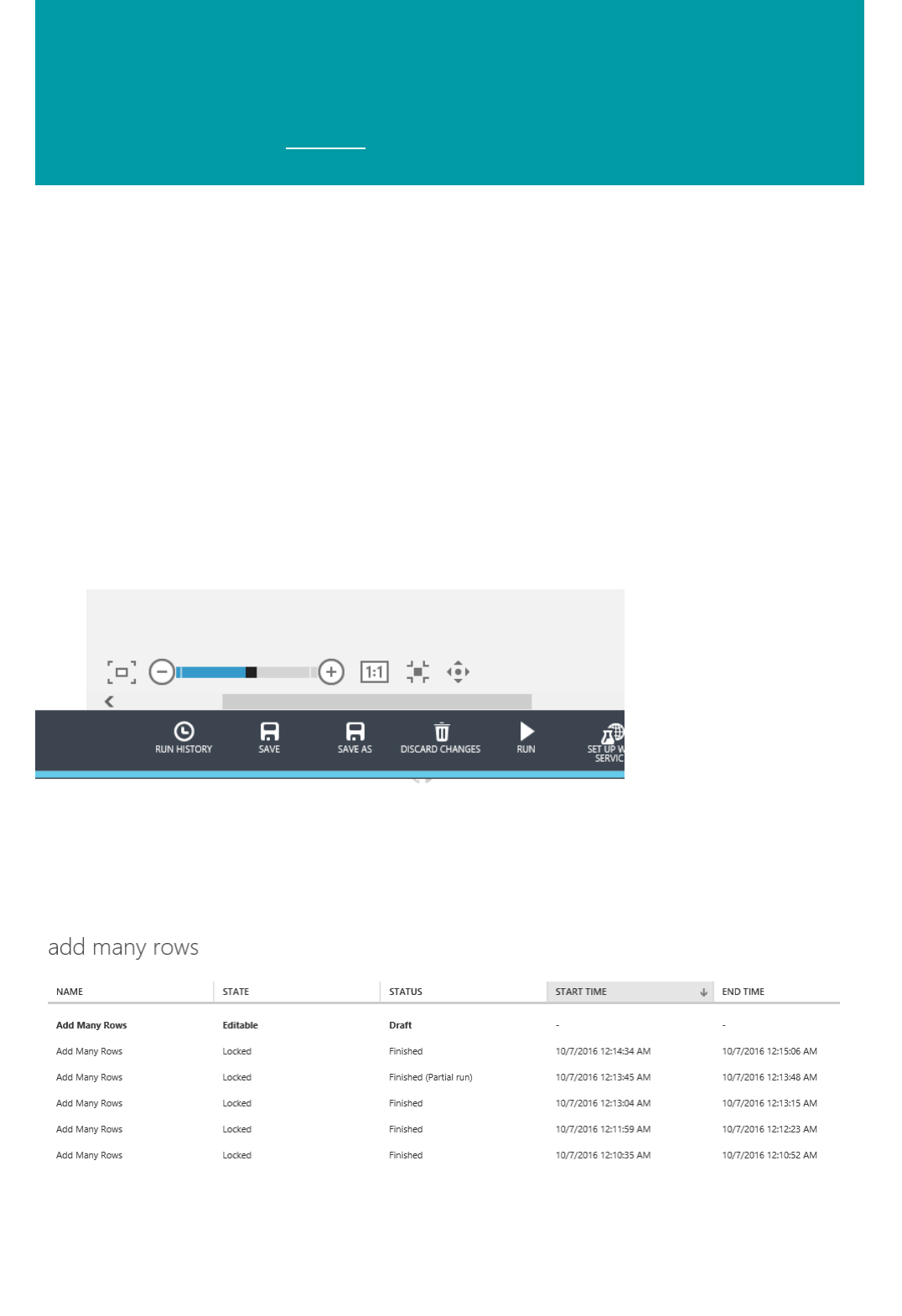

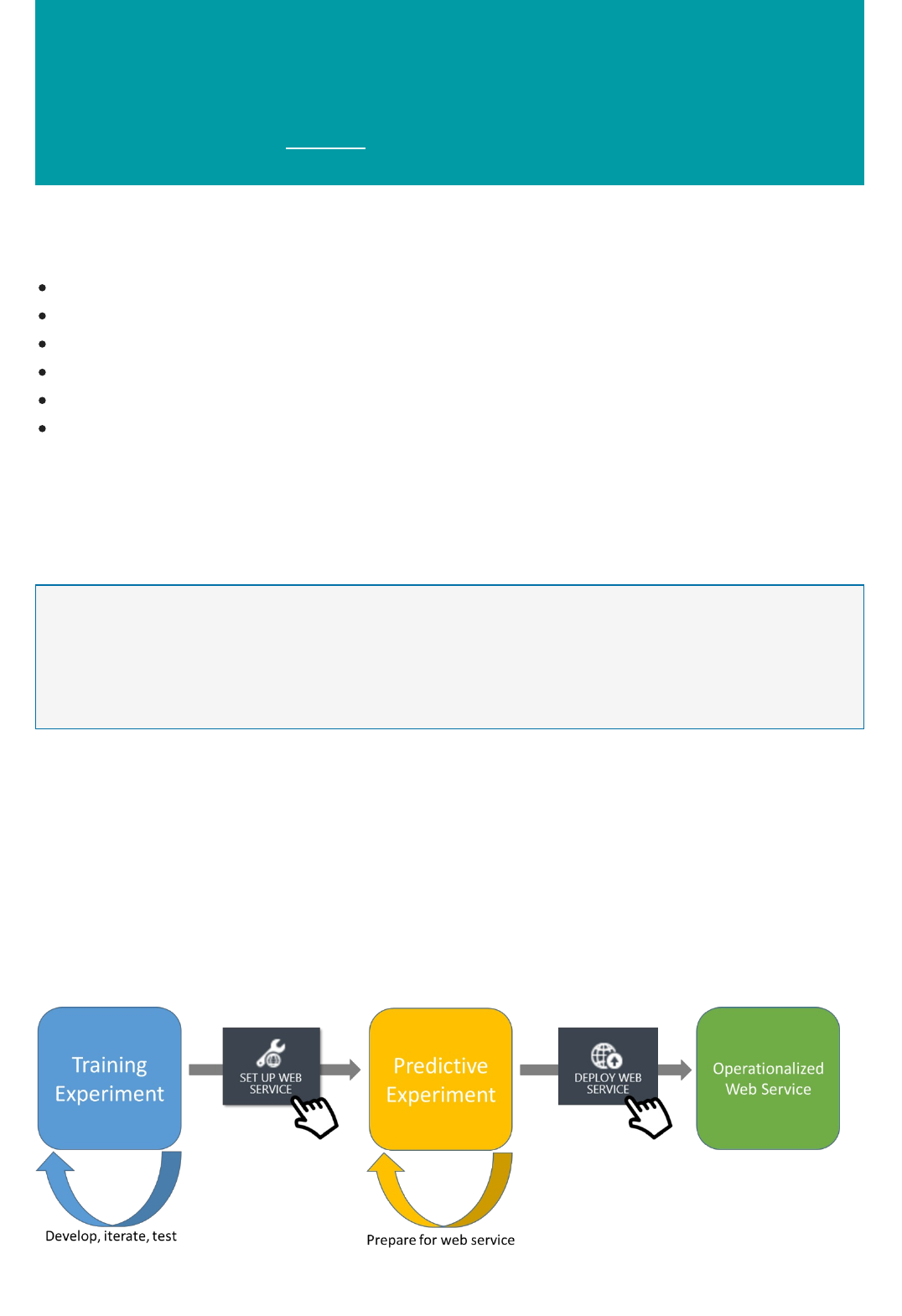

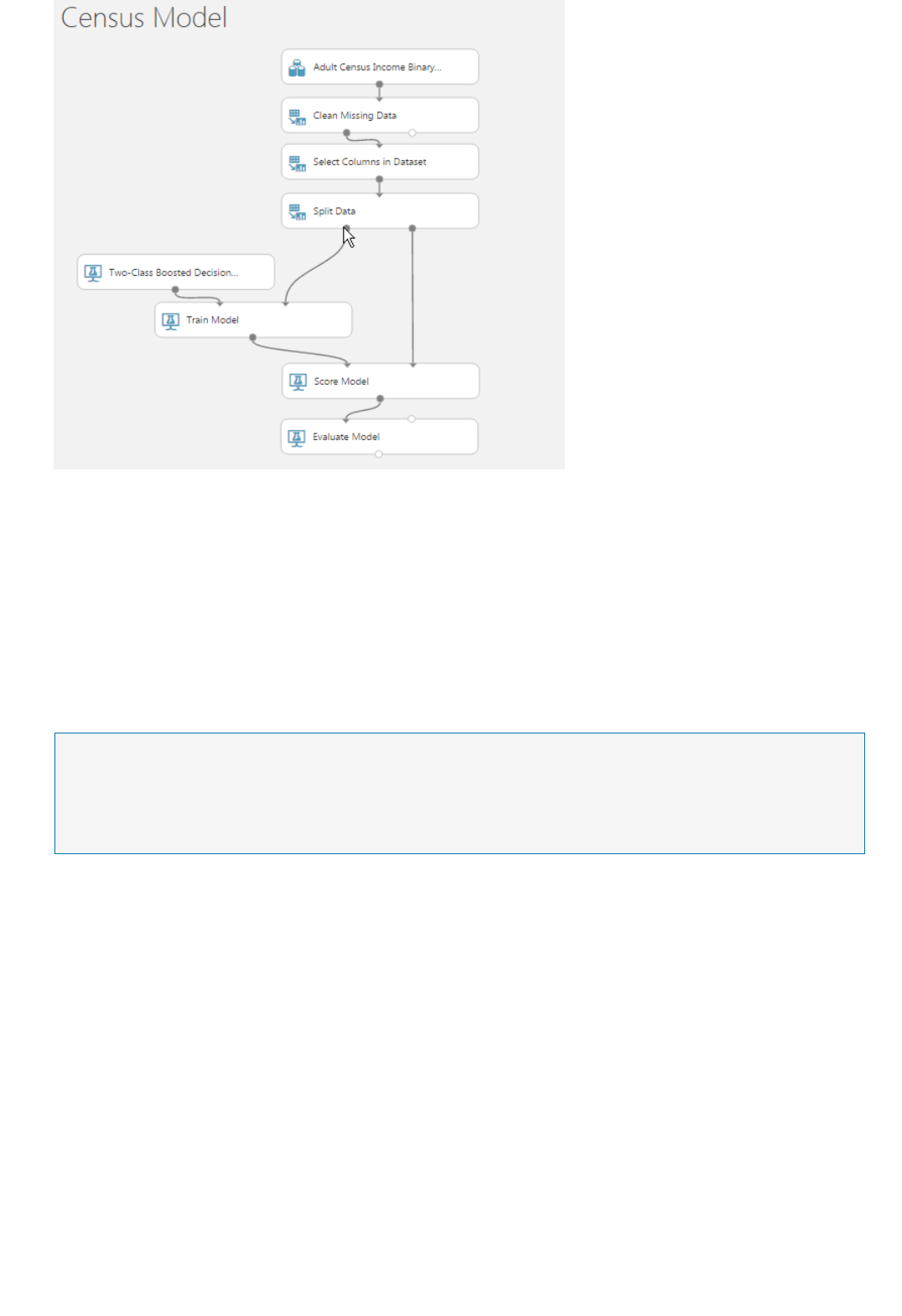

Azure Machine Learning Studio gives you an interactive, visual workspace to easily build, test, and iterate on a

predictive analysis model. You drag-and-drop datasets and analysis modules onto an interactive canvas,

connecting them together to form an experiment, which you run in Machine Learning Studio. To iterate on your

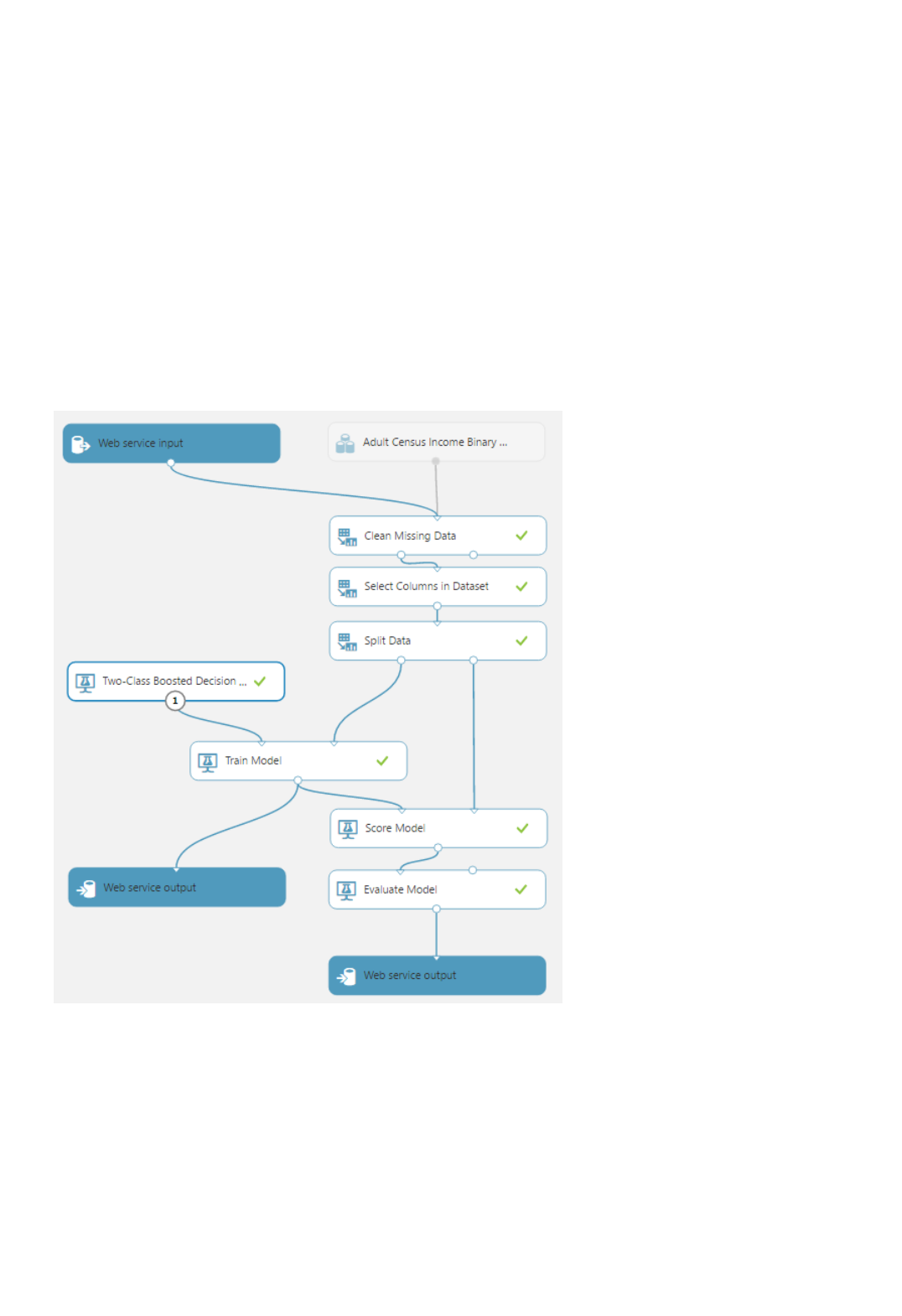

model design, you edit the experiment, save a copy if desired, and run it again. When you're ready, you can convert

your training experiment to a predictive experiment, and then publish it as a web service so that your model

can be accessed by others.

There is no programming required, just visually connecting datasets and modules to construct your predictive

analysis model.

To download and print a diagram that gives an overview of the capabilities of Machine Learning Studio, see Overview

diagram of Azure Machine Learning Studio capabilities.

Get started with Machine Learning Studio

Cortana IntelligenceCortana Intelligence

Azure Machine Learning StudioAzure Machine Learning Studio

GalleryGallery

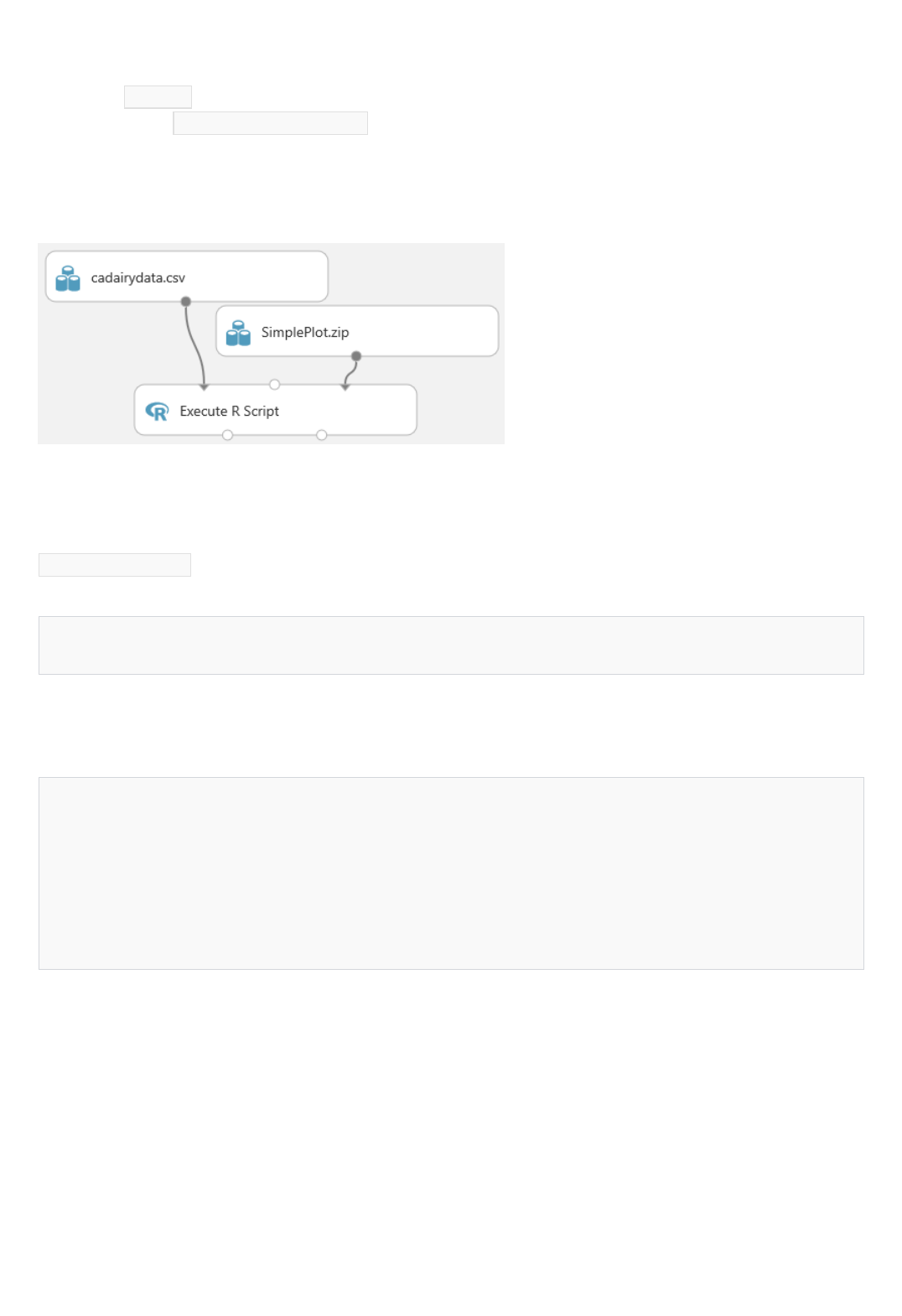

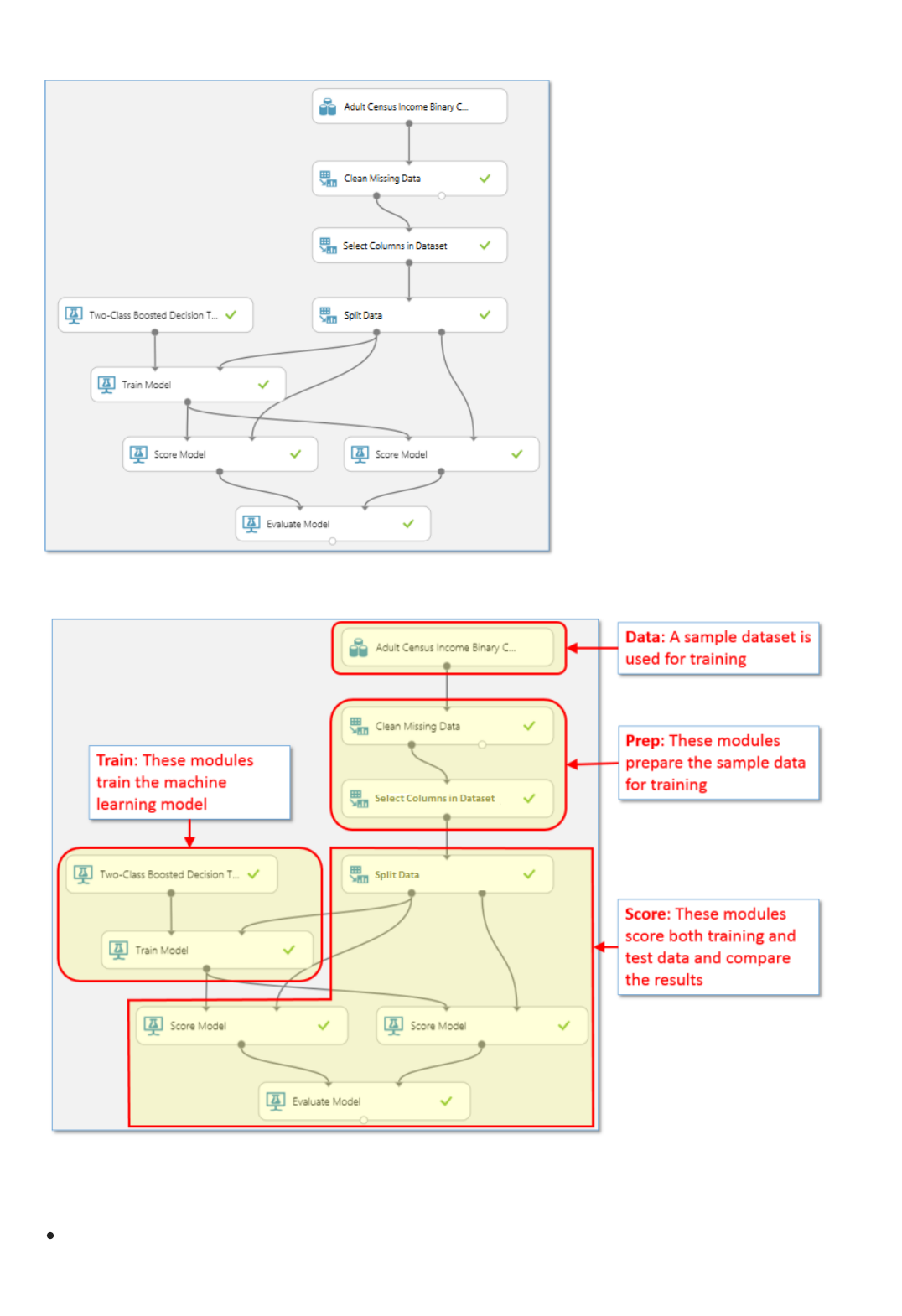

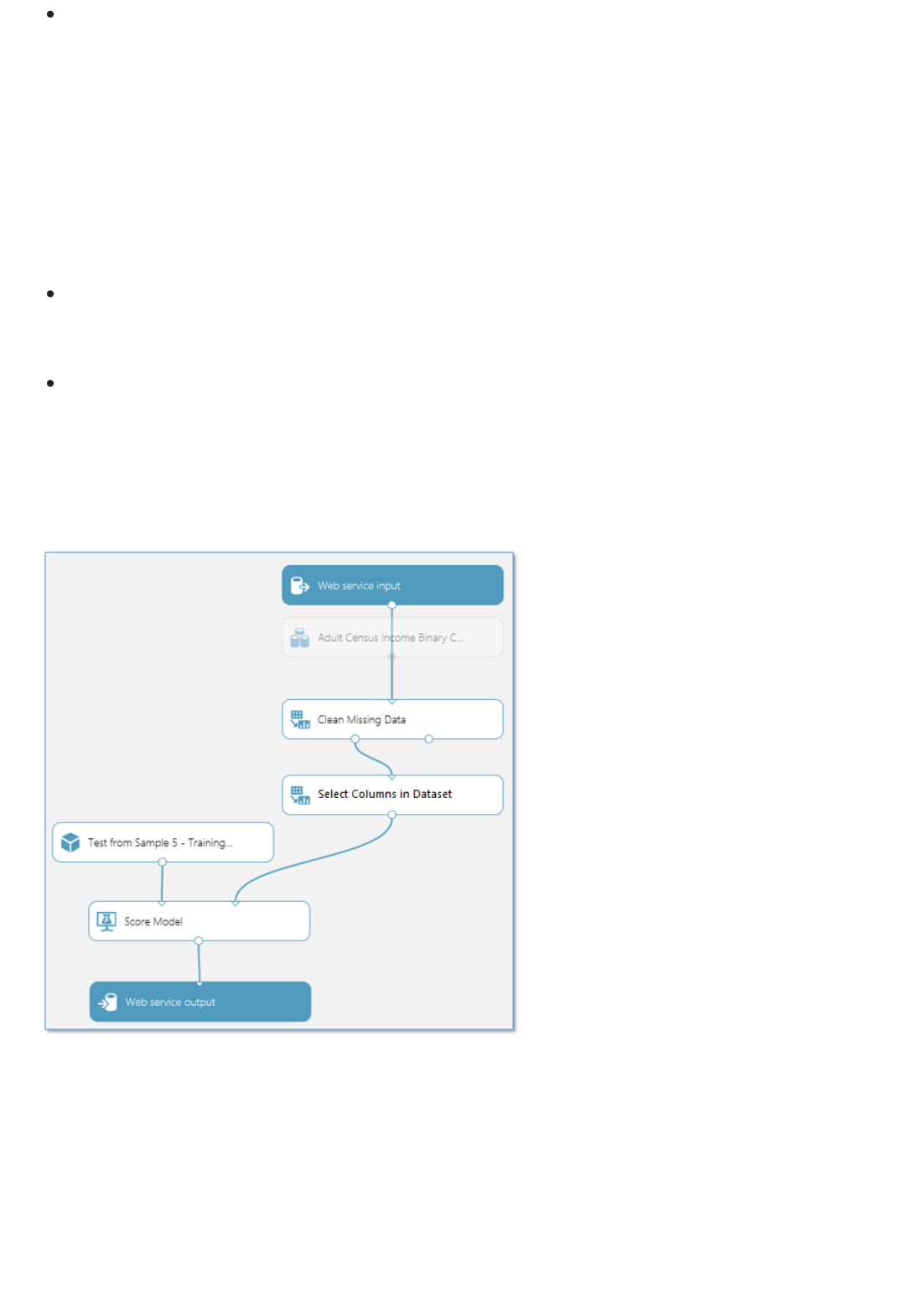

Components of an experiment

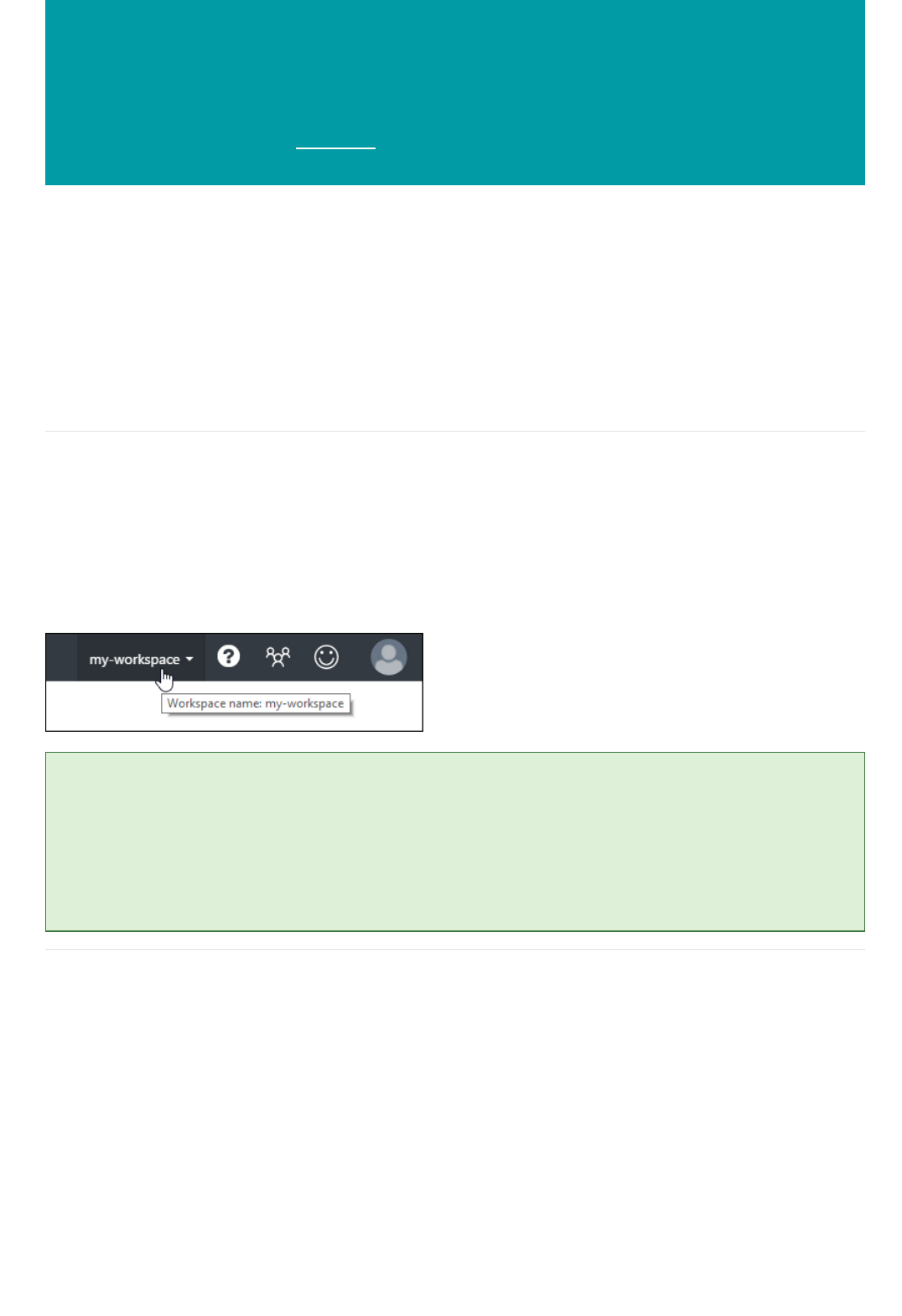

When you first enter Machine Learning Studio you see the Home page. From here you can view documentation,

videos, webinars, and find other valuable resources.

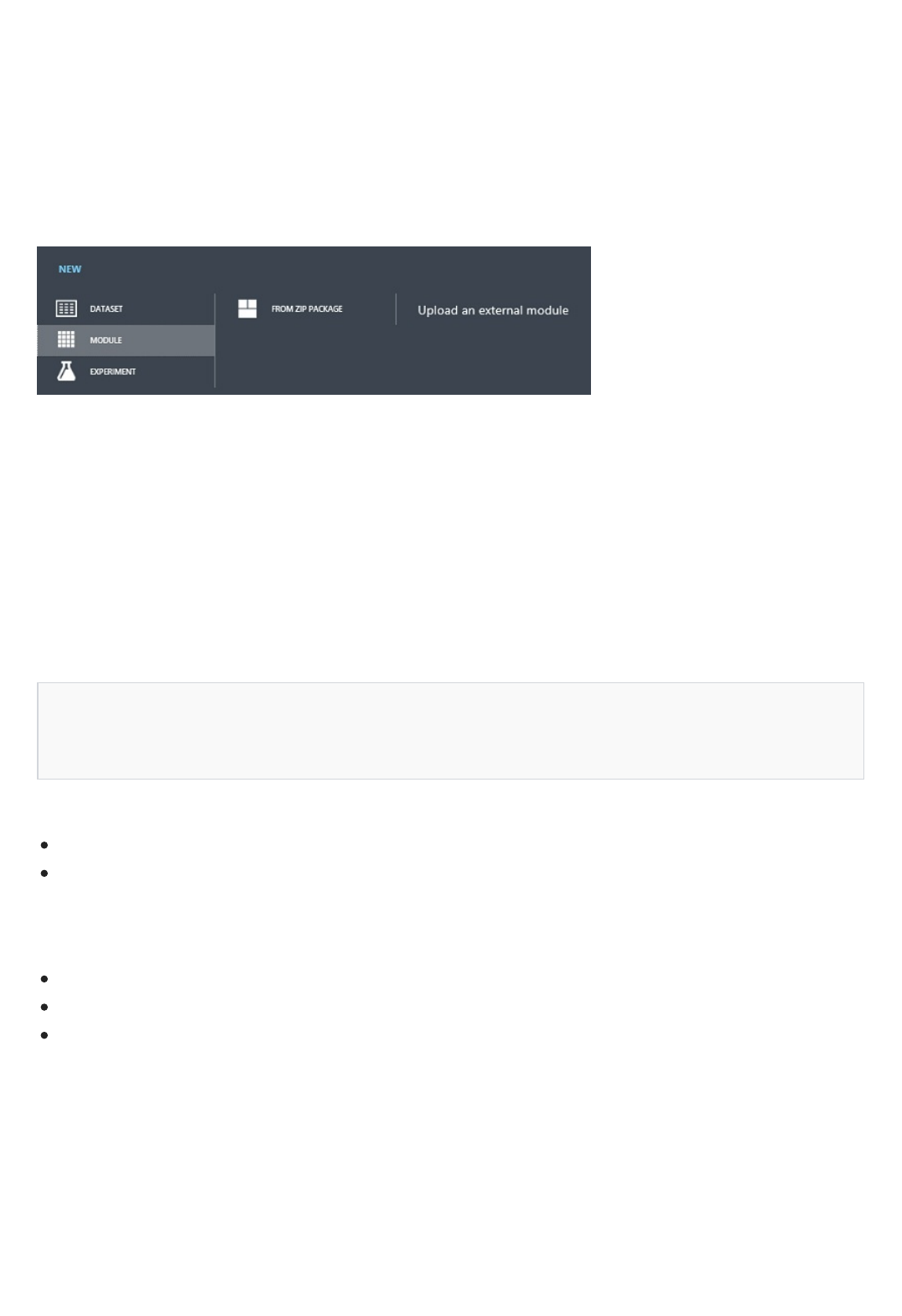

Click the upper-left menu and you'll see several options.

Click Cortana Intelligence and you'll be taken to the home page of the Cortana Intelligence Suite. The Cortana

Intelligence Suite is a fully managed big data and advanced analytics suite to transform your data into intelligent

action. See the Suite home page for full documentation, including customer stories.

There are two options here, Home, the page where you started, and Studio.

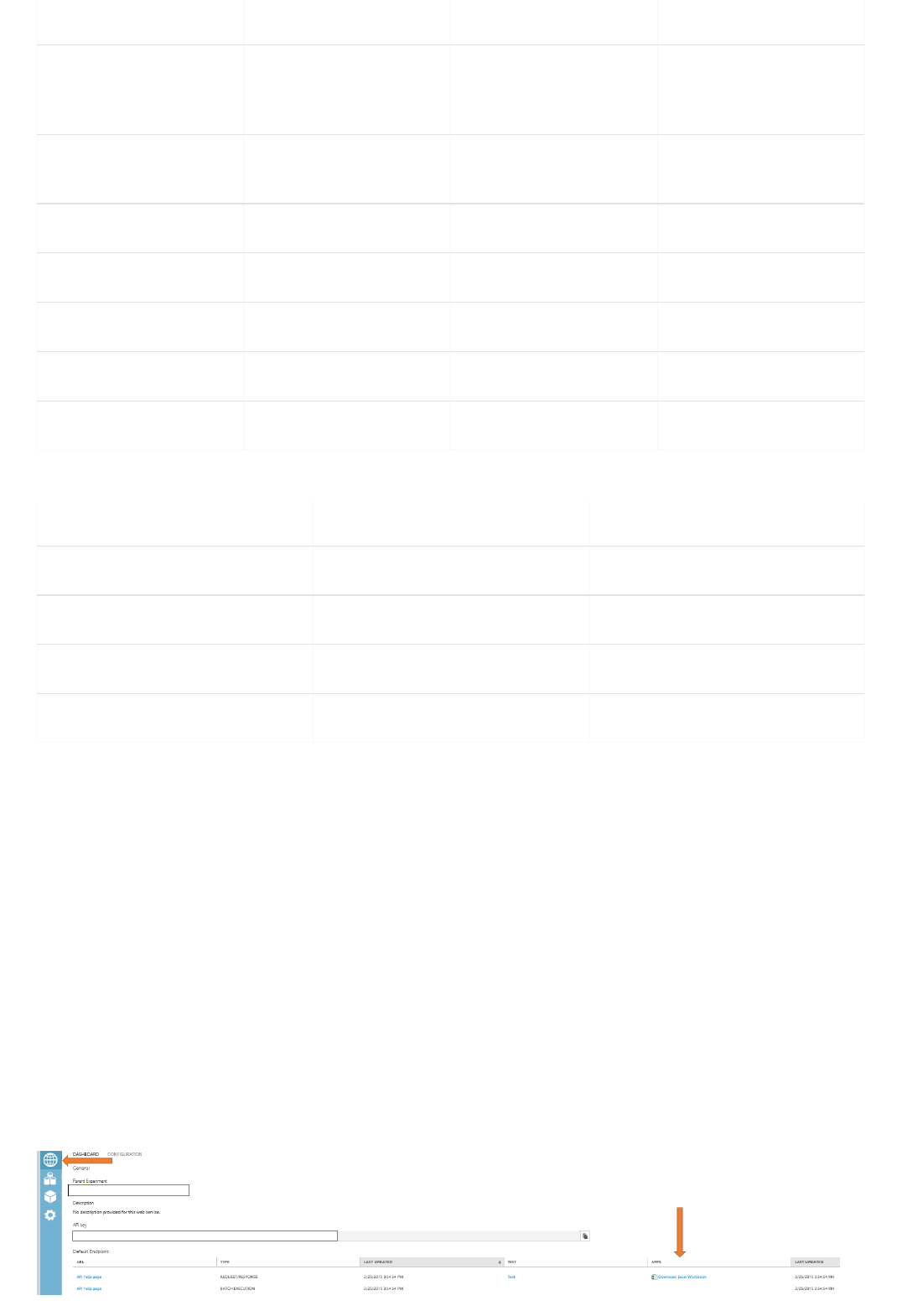

Click Studio and you'll be taken to the Azure Machine Learning Studio. First you'll be asked to sign in using

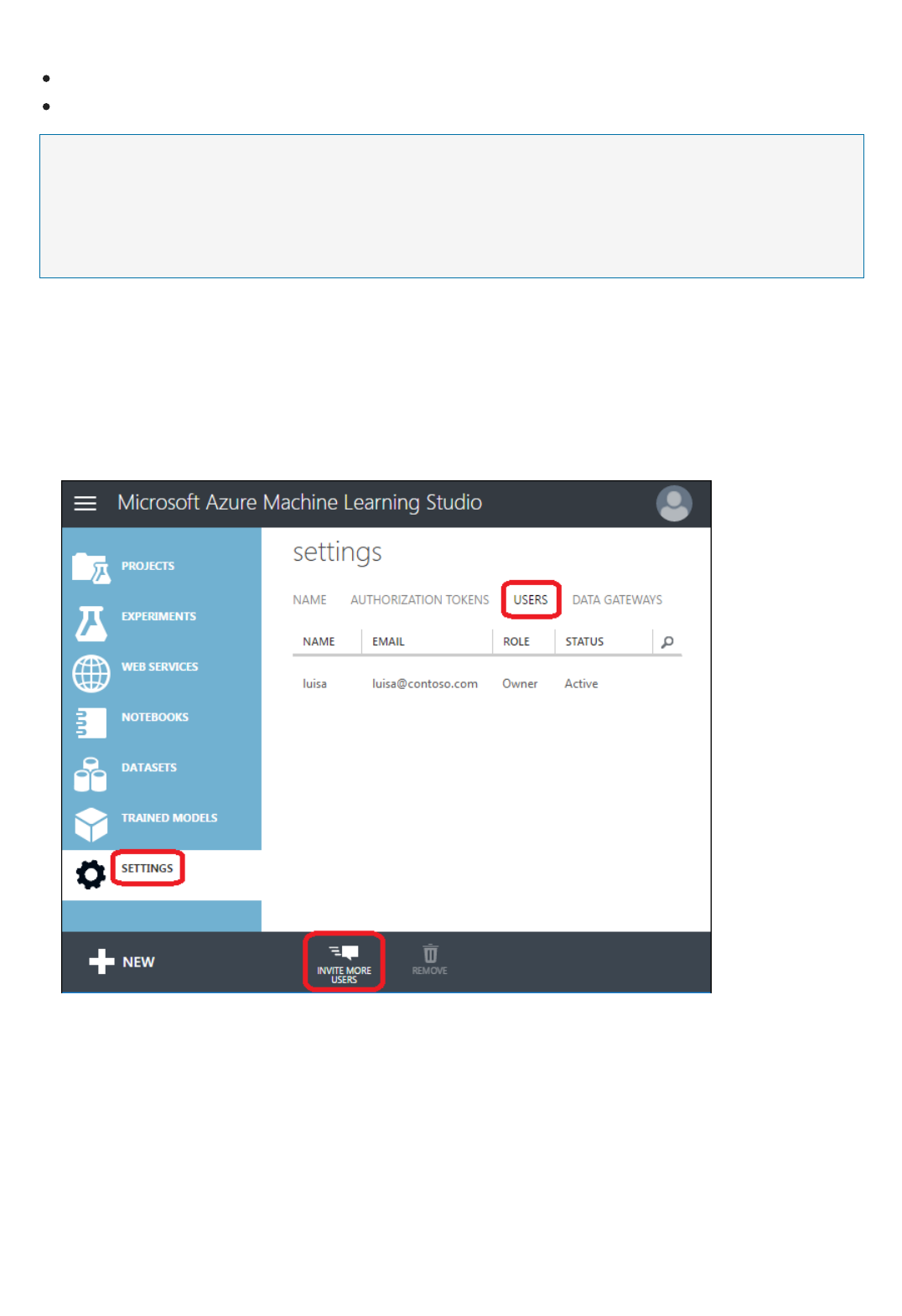

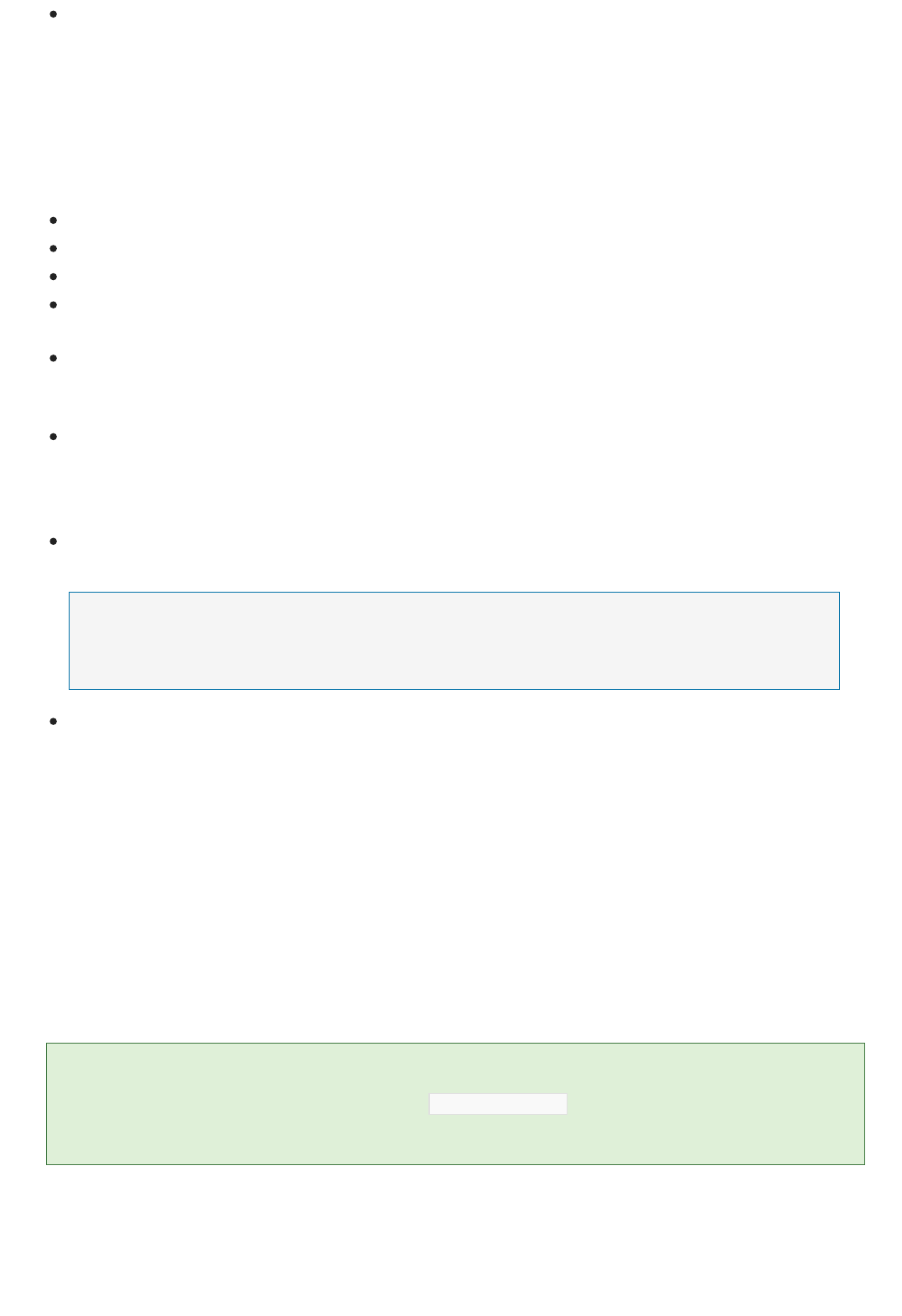

your Microsoft account, or your work or school account. Once signed in, you'll see the following tabs on the left:

PROJECTS - Collections of experiments, datasets, notebooks, and other resources representing a single project

EXPERIMENTS - Experiments that you have created and run or saved as drafts

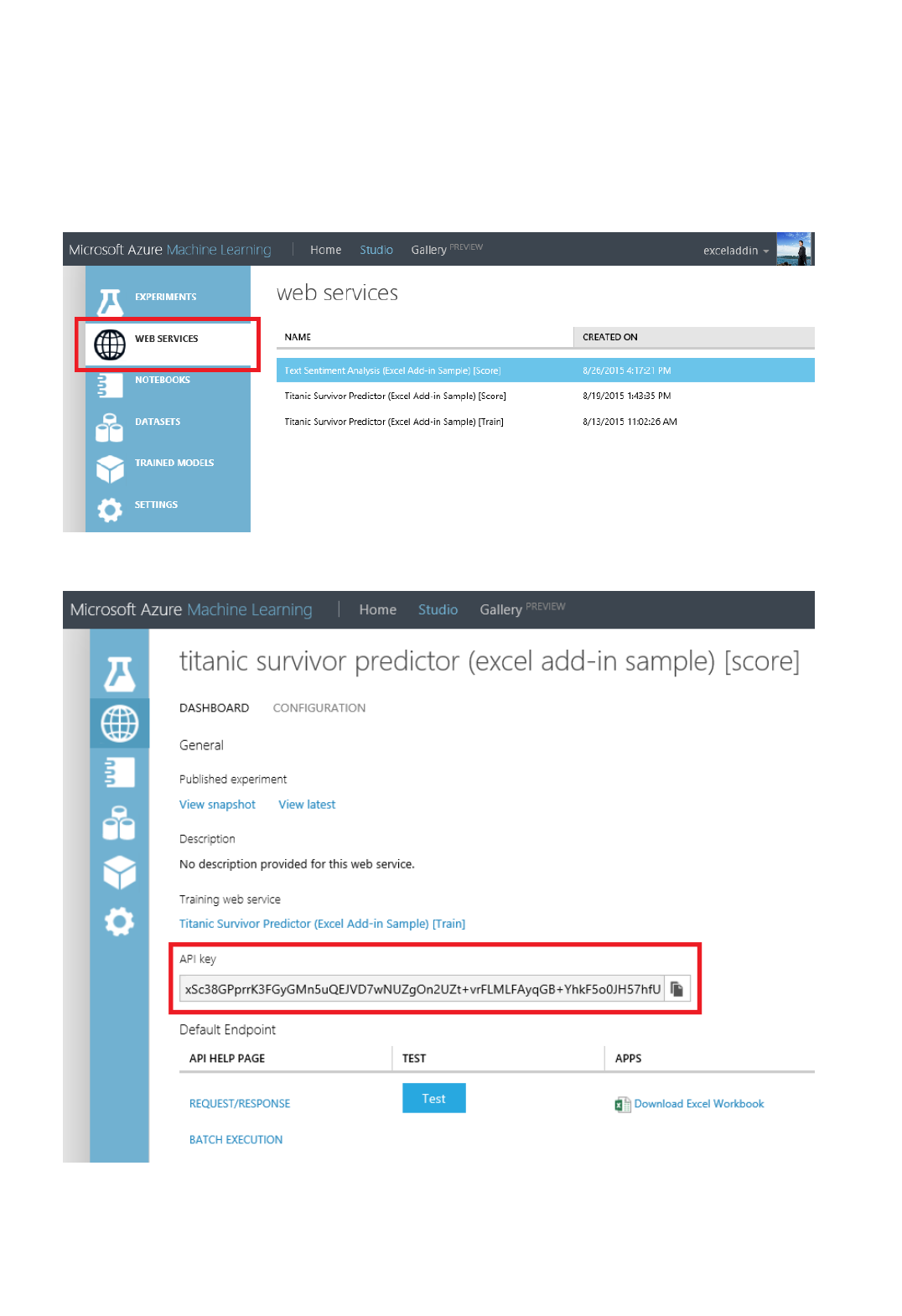

WEB SERVICES - Web services that you have deployed from your experiments

NOTEBOOKS - Jupyter notebooks that you have created

DATASETS - Datasets that you have uploaded into Studio

TRAINED MODELS - Models that you have trained in experiments and saved in Studio

SETTINGS - A collection of settings that you can use to configure your account and resources.

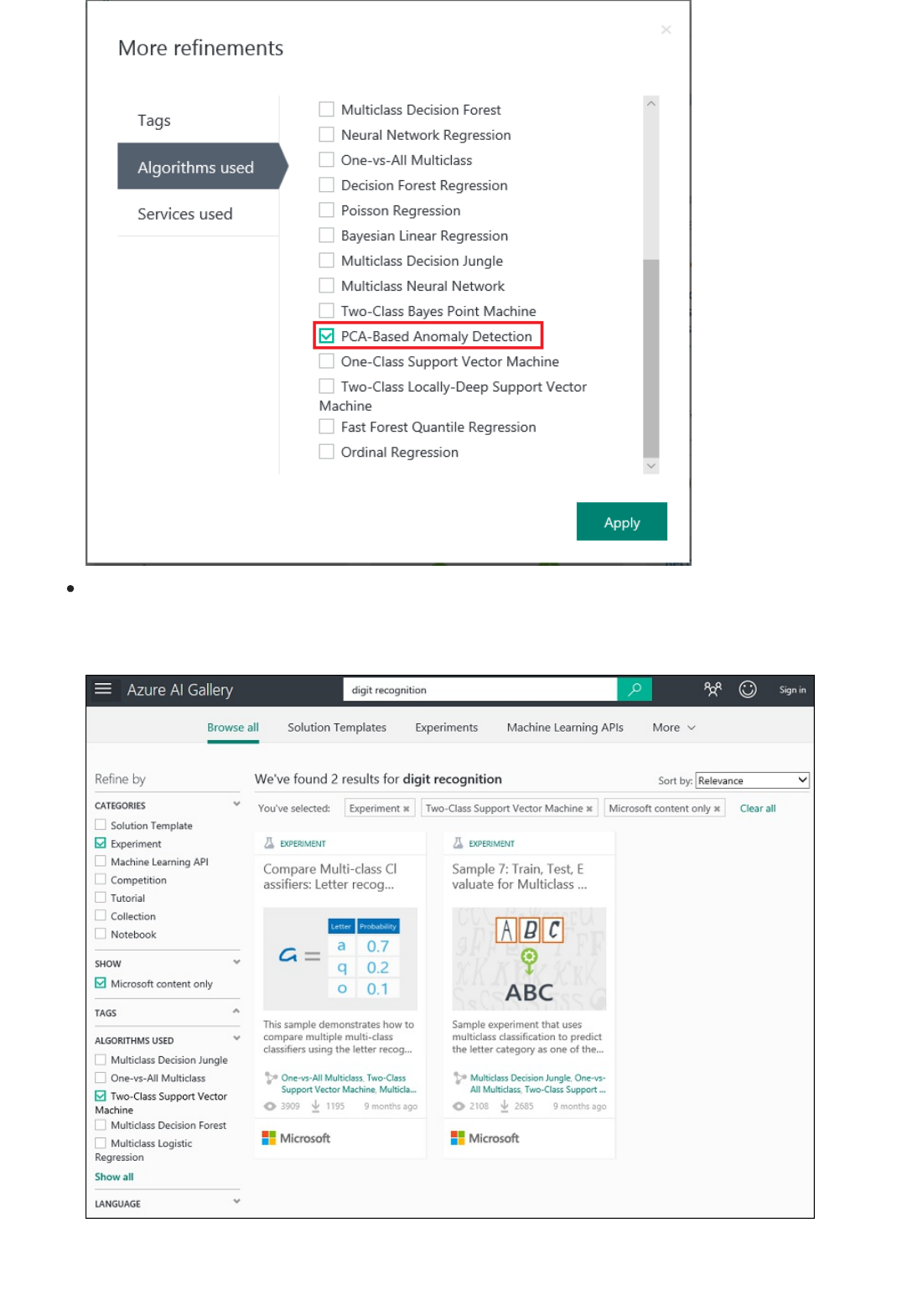

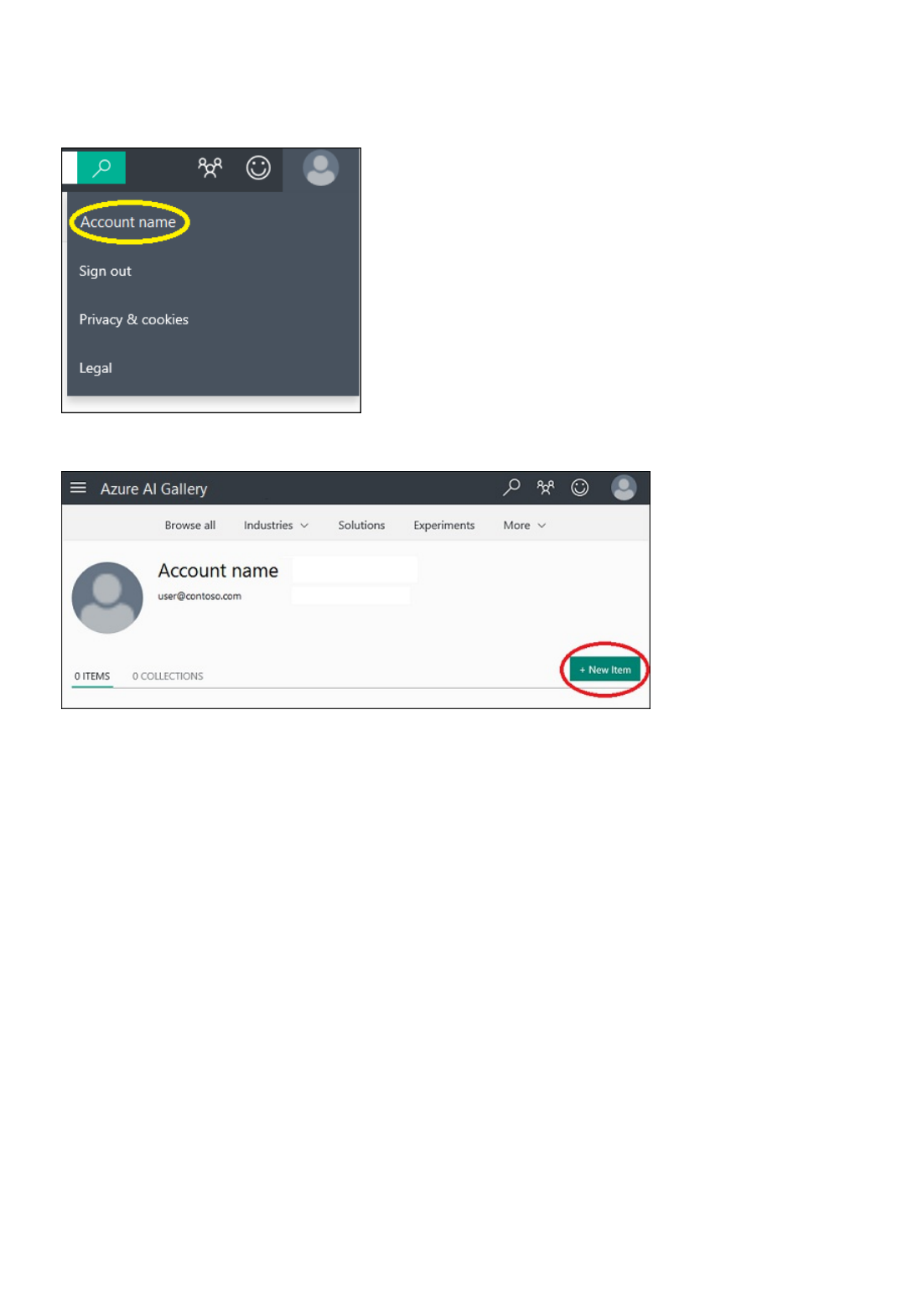

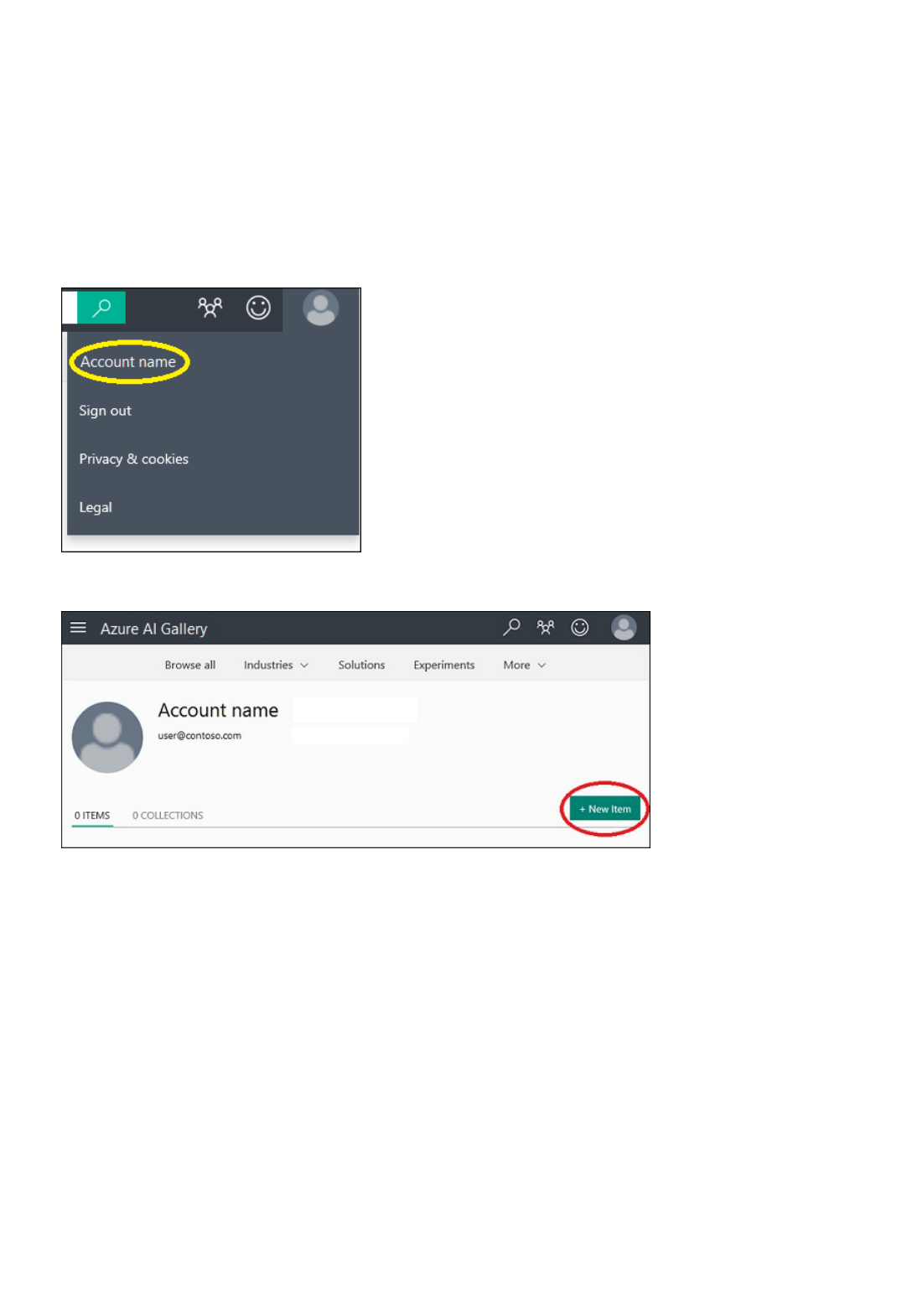

Click Gallery and you'll be taken to the Azure AI Gallery. The Gallery is a place where a community of data

scientists and developers share solutions created using components of the Cortana Intelligence Suite.

For more information about the Gallery, see Share and discover solutions in the Azure AI Gallery.

DatasetsDatasets

ModulesModules

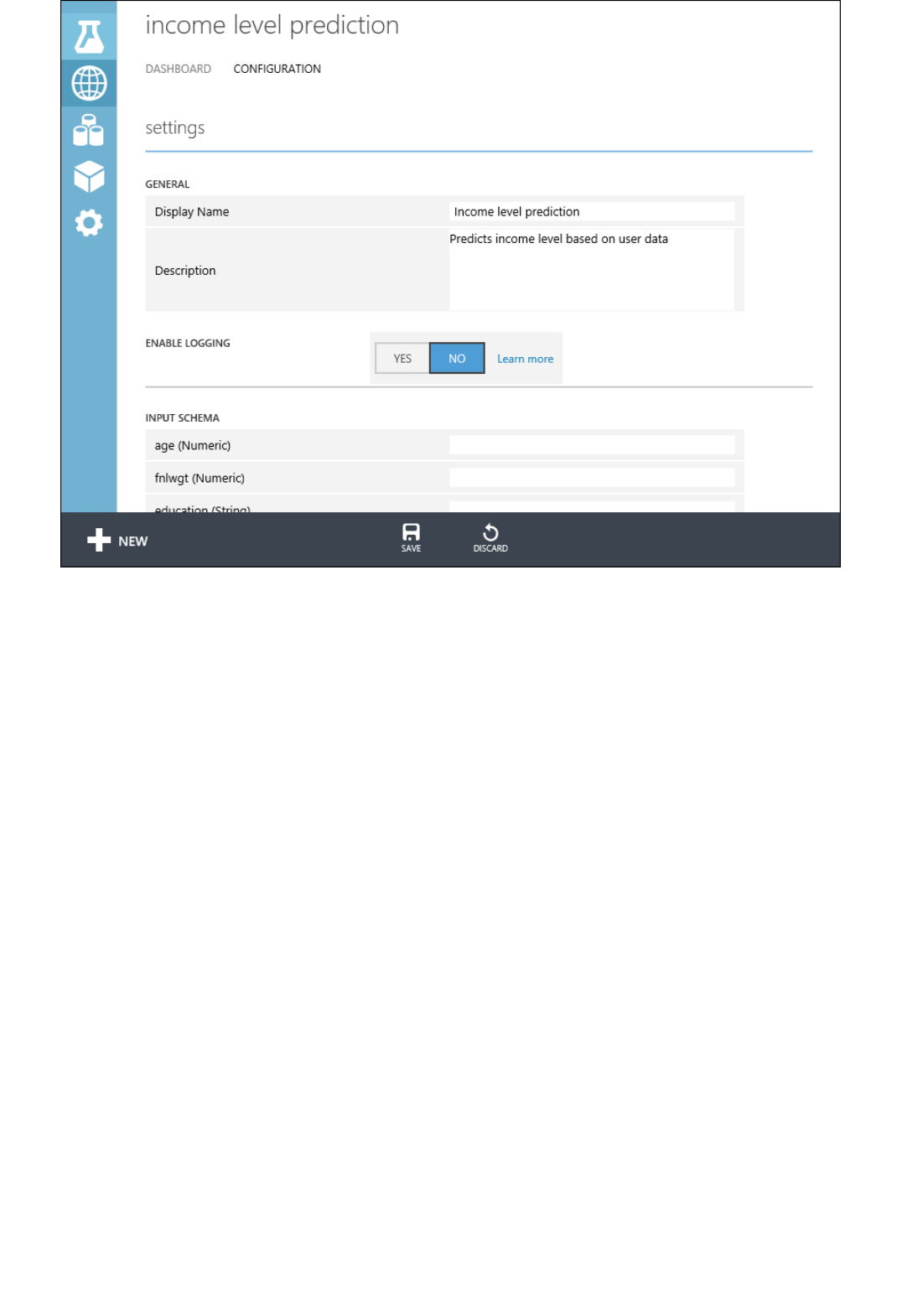

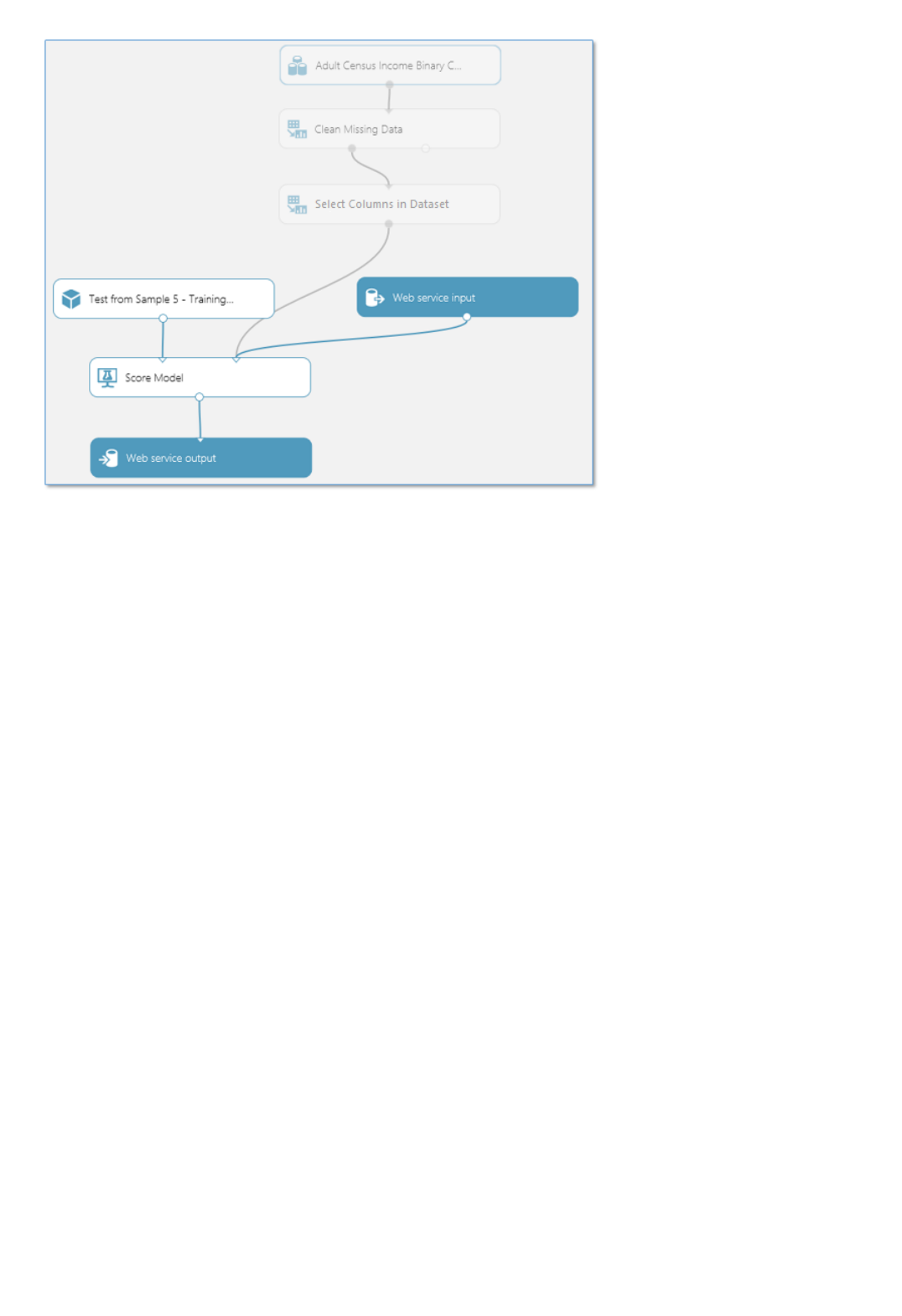

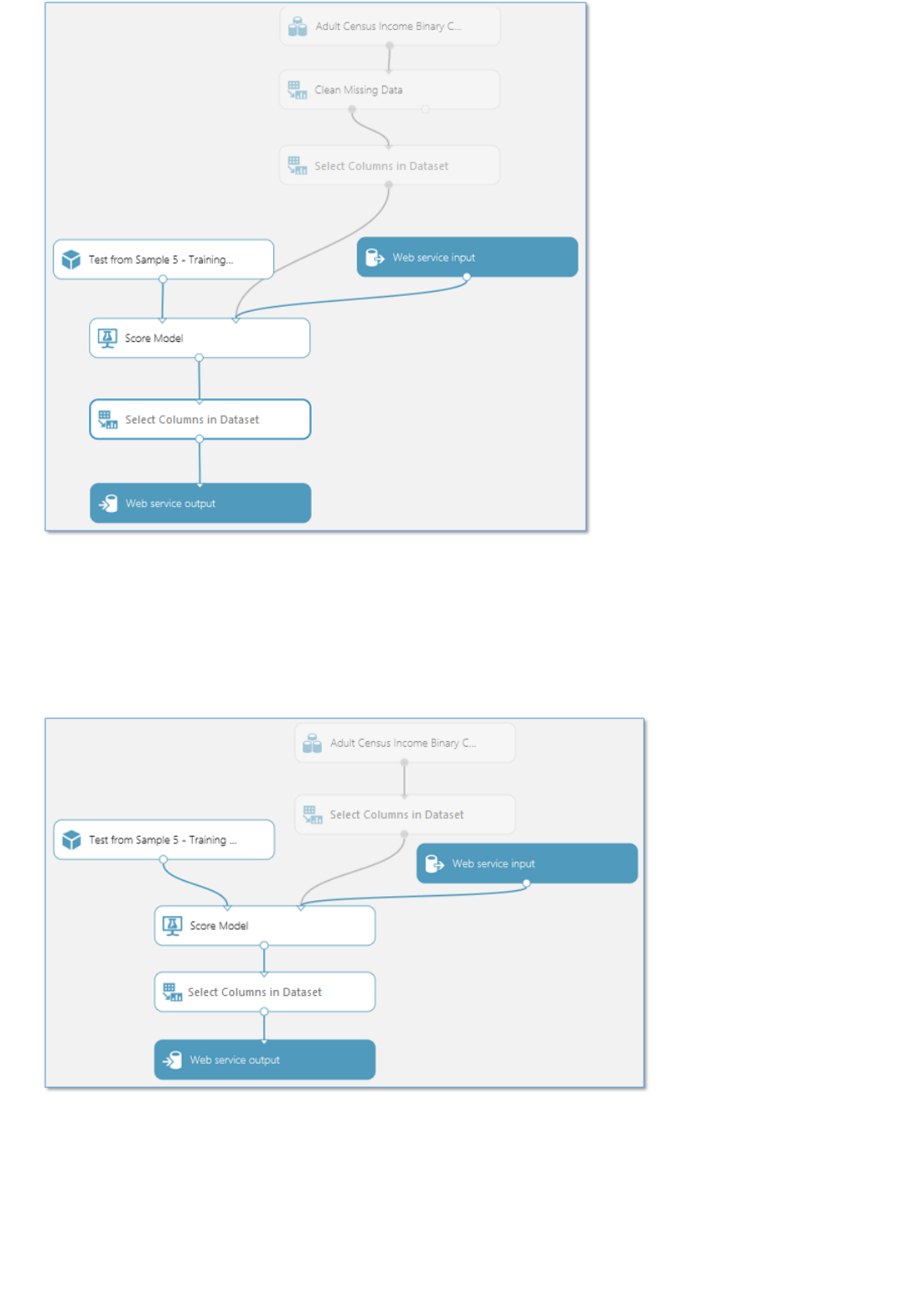

Deploying a predictive analytics web service

An experiment consists of datasets that provide data to analytical modules, which you connect together to

construct a predictive analysis model. Specifically, a valid experiment has these characteristics:

The experiment has at least one dataset and one module

Datasets may be connected only to modules

Modules may be connected to either datasets or other modules

All input ports for modules must have some connection to the data flow

All required parameters for each module must be set

You can create an experiment from scratch, or you can use an existing sample experiment as a template. For more

information, see Copy example experiments to create new machine learning experiments.

For an example of creating a simple experiment, see Create a simple experiment in Azure Machine Learning

Studio.

For a more complete walkthrough of creating a predictive analytics solution, see Develop a predictive solution with

Azure Machine Learning.

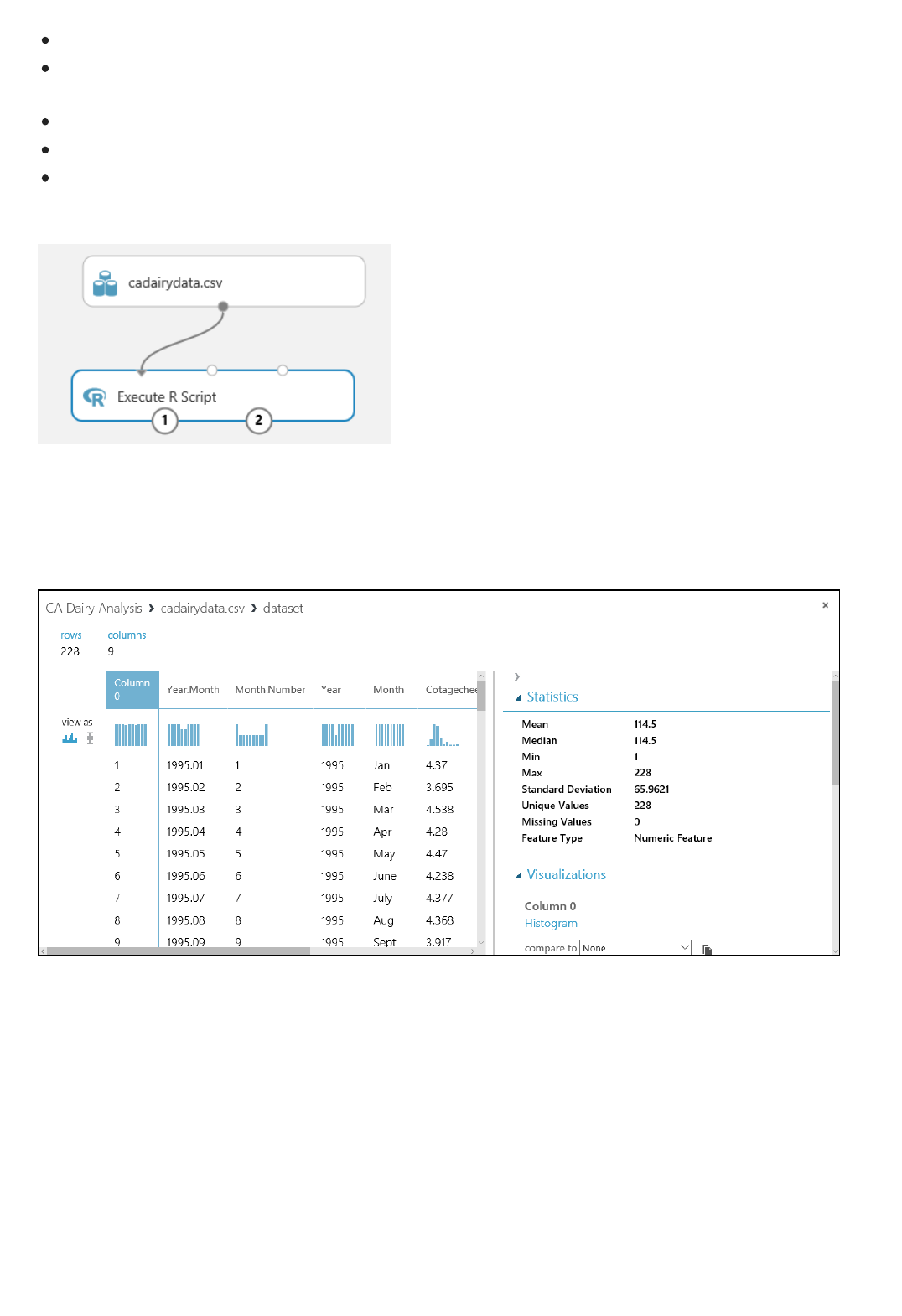

A dataset is data that has been uploaded to Machine Learning Studio so that it can be used in the modeling

process. A number of sample datasets are included with Machine Learning Studio for you to experiment with, and

you can upload more datasets as you need them. Here are some examples of included datasets:

MPG data for various automobiles - Miles per gallon (MPG) values for automobiles identified by number of

cylinders, horsepower, etc.

Breast cancer data - Breast cancer diagnosis data.

Forest fires data - Forest fire sizes in northeast Portugal.

As you build an experiment you can choose from the list of datasets available to the left of the canvas.

For a list of sample datasets included in Machine Learning Studio, see Use the sample data sets in Azure Machine

Learning Studio.

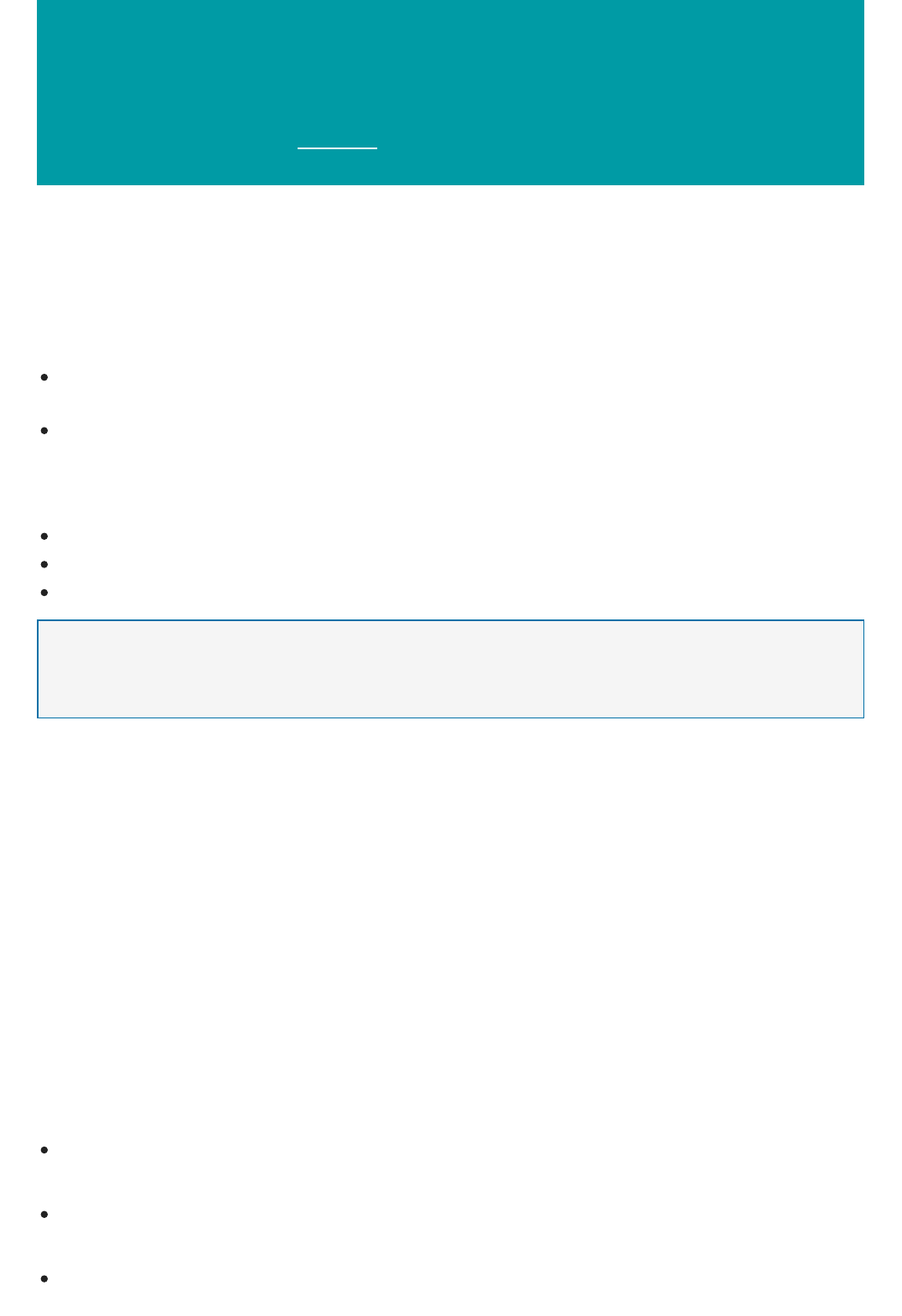

A module is an algorithm that you can perform on your data. Machine Learning Studio has a number of modules

ranging from data ingress functions to training, scoring, and validation processes. Here are some examples of

included modules:

Convert to ARFF - Converts a .NET serialized dataset to Attribute-Relation File Format (ARFF).

Compute Elementary Statistics - Calculates elementary statistics such as mean, standard deviation, etc.

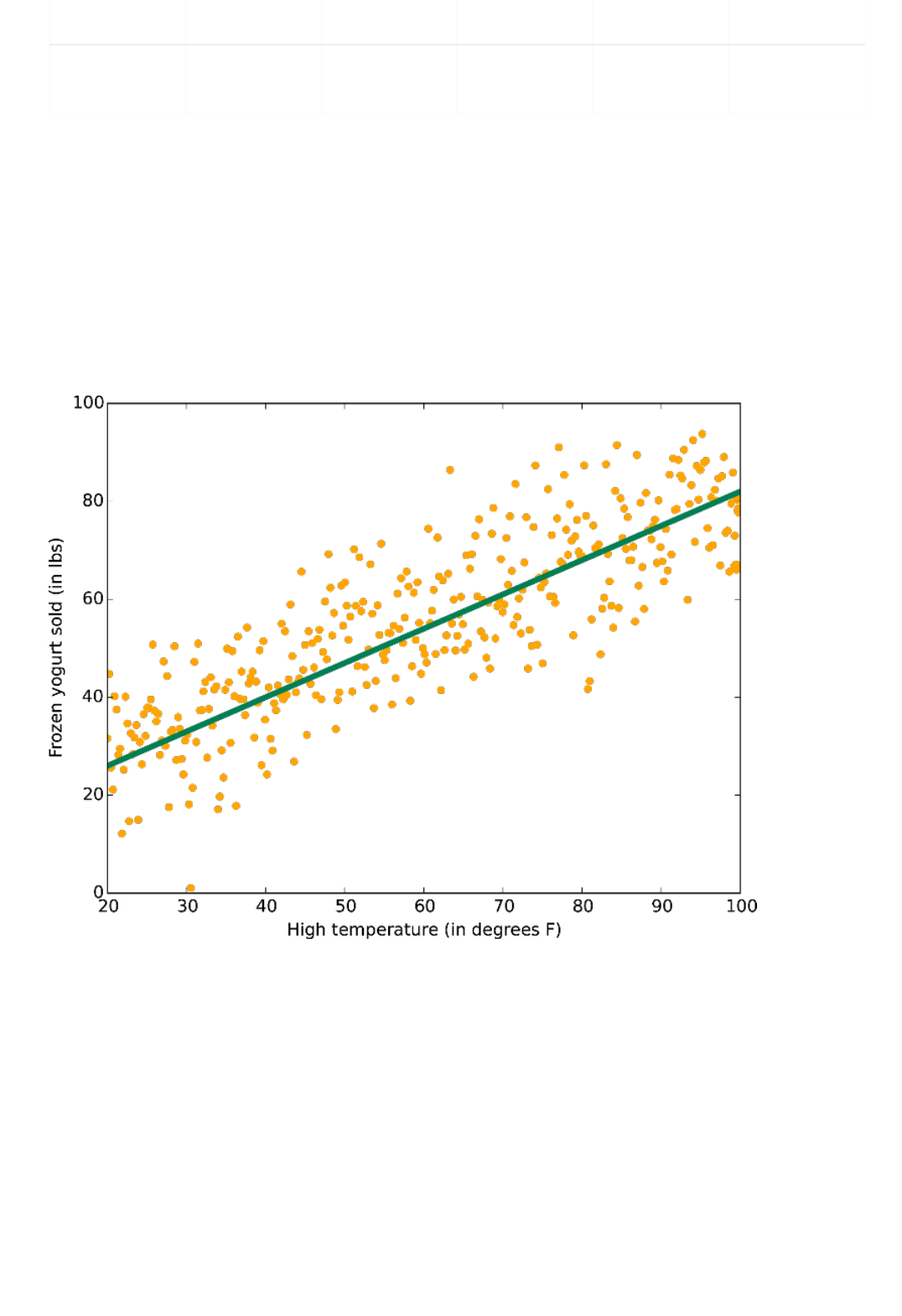

Linear Regression - Creates an online gradient descent-based linear regression model.

Score Model - Scores a trained classification or regression model.

As you build an experiment you can choose from the list of modules available to the left of the canvas.

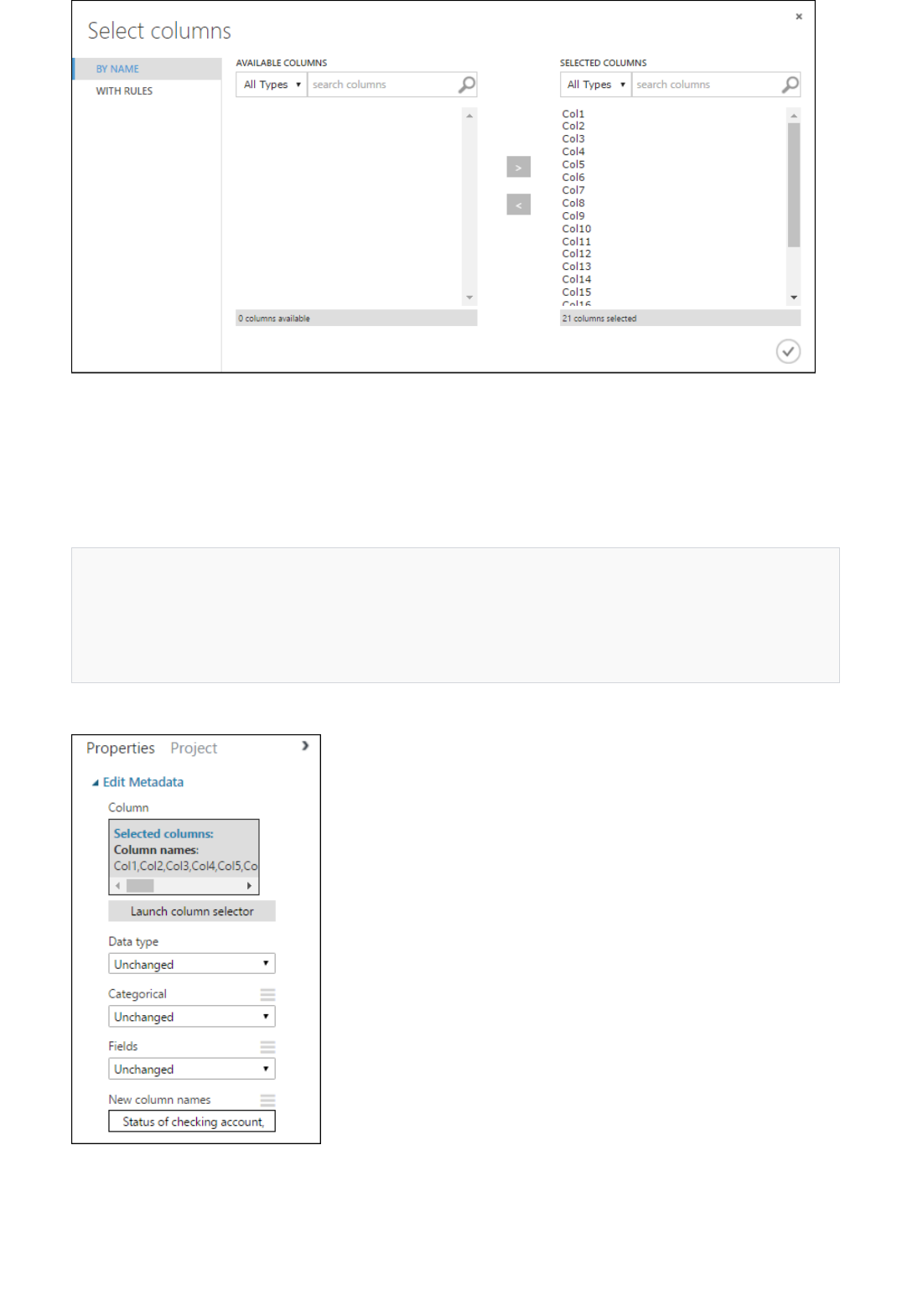

A module may have a set of parameters that you can use to configure the module's internal algorithms. When you

select a module on the canvas, the module's parameters are displayed in the Properties pane to the right of the

canvas. You can modify the parameters in that pane to tune your model.

For some help navigating through the large library of machine learning algorithms available, see How to choose

algorithms for Microsoft Azure Machine Learning.

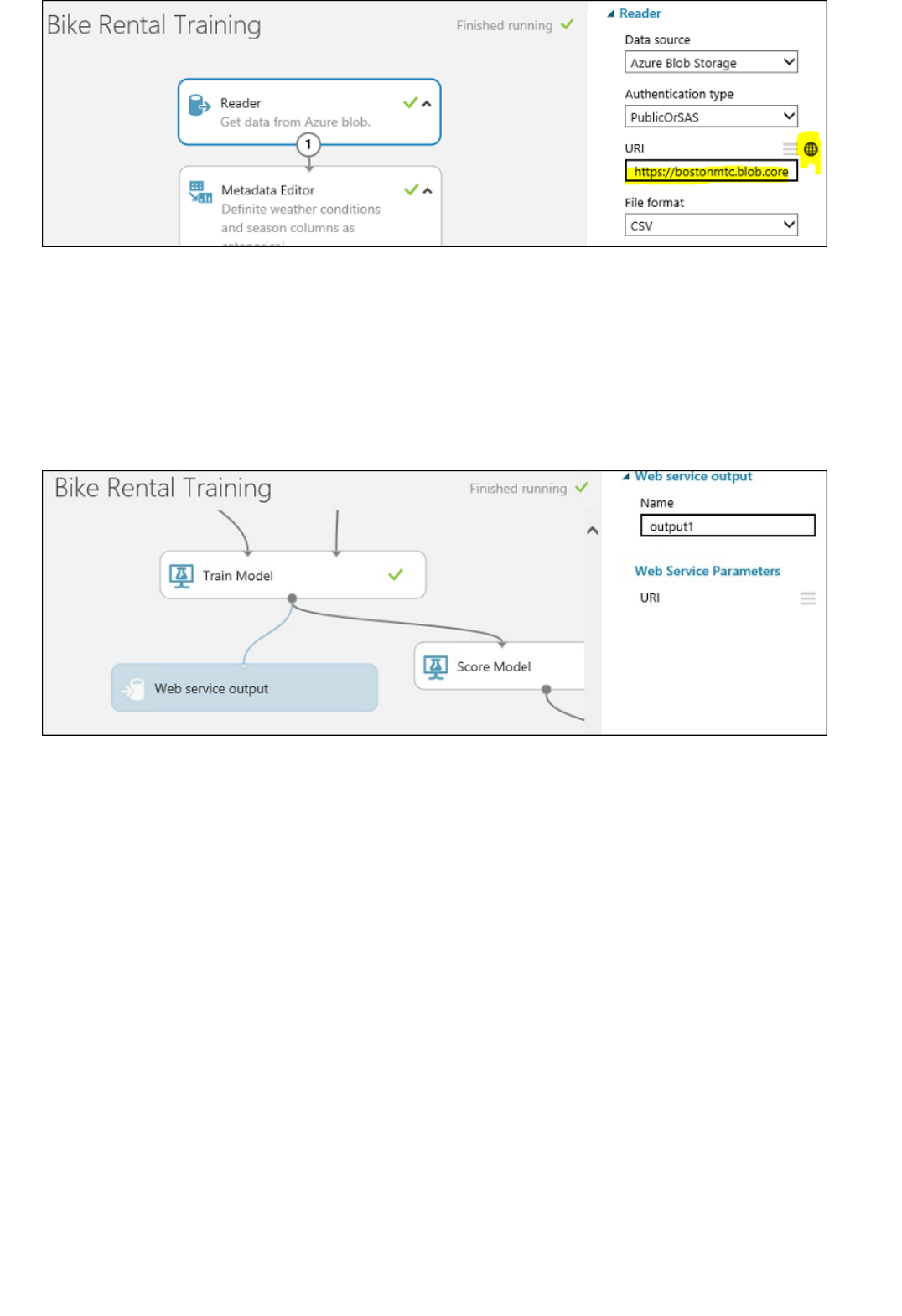

Once your predictive analytics model is ready, you can deploy it as a web service right from Machine Learning

Studio. For more details on this process, see Deploy an Azure Machine Learning web service.

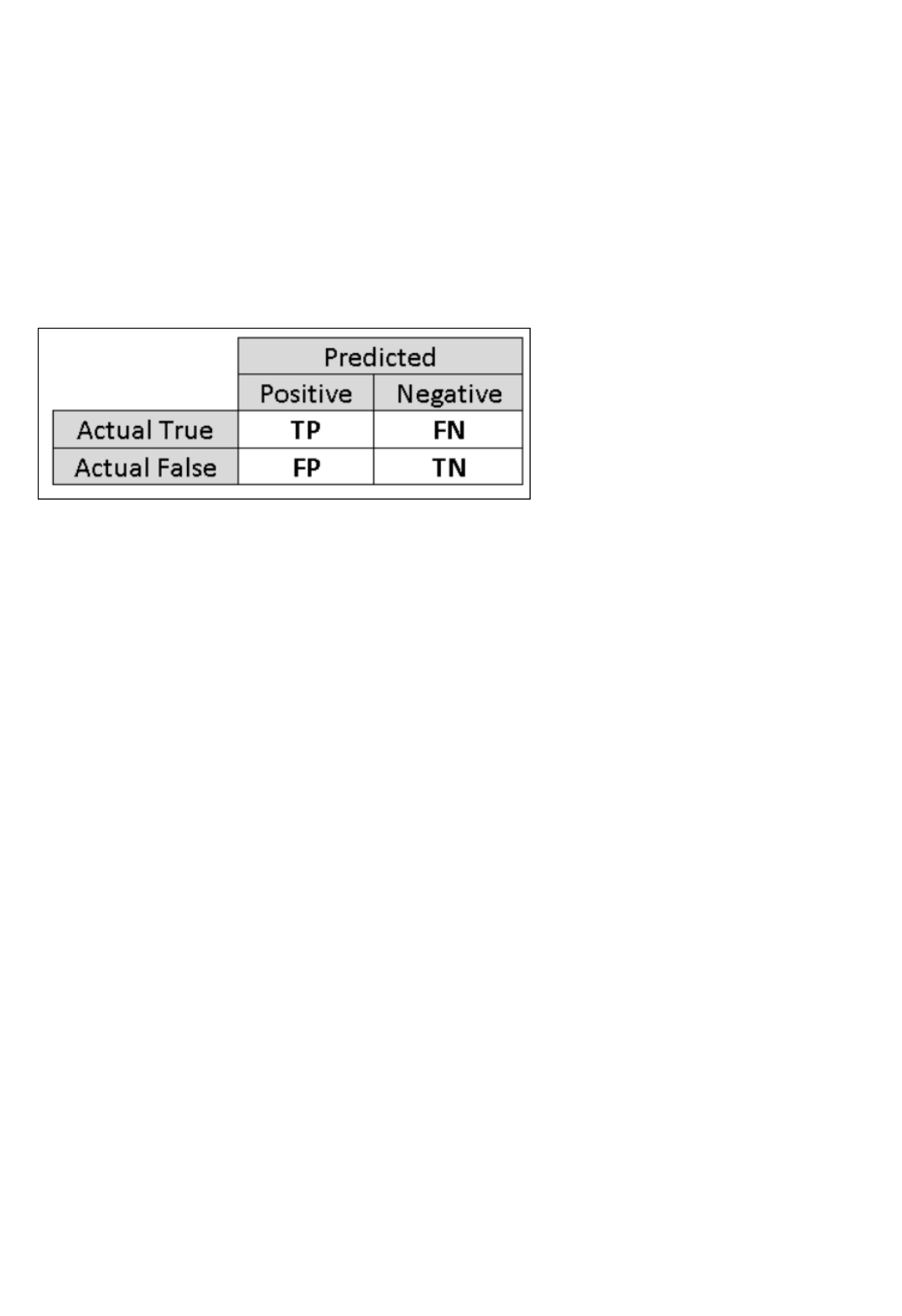

Key machine learning terms and concepts

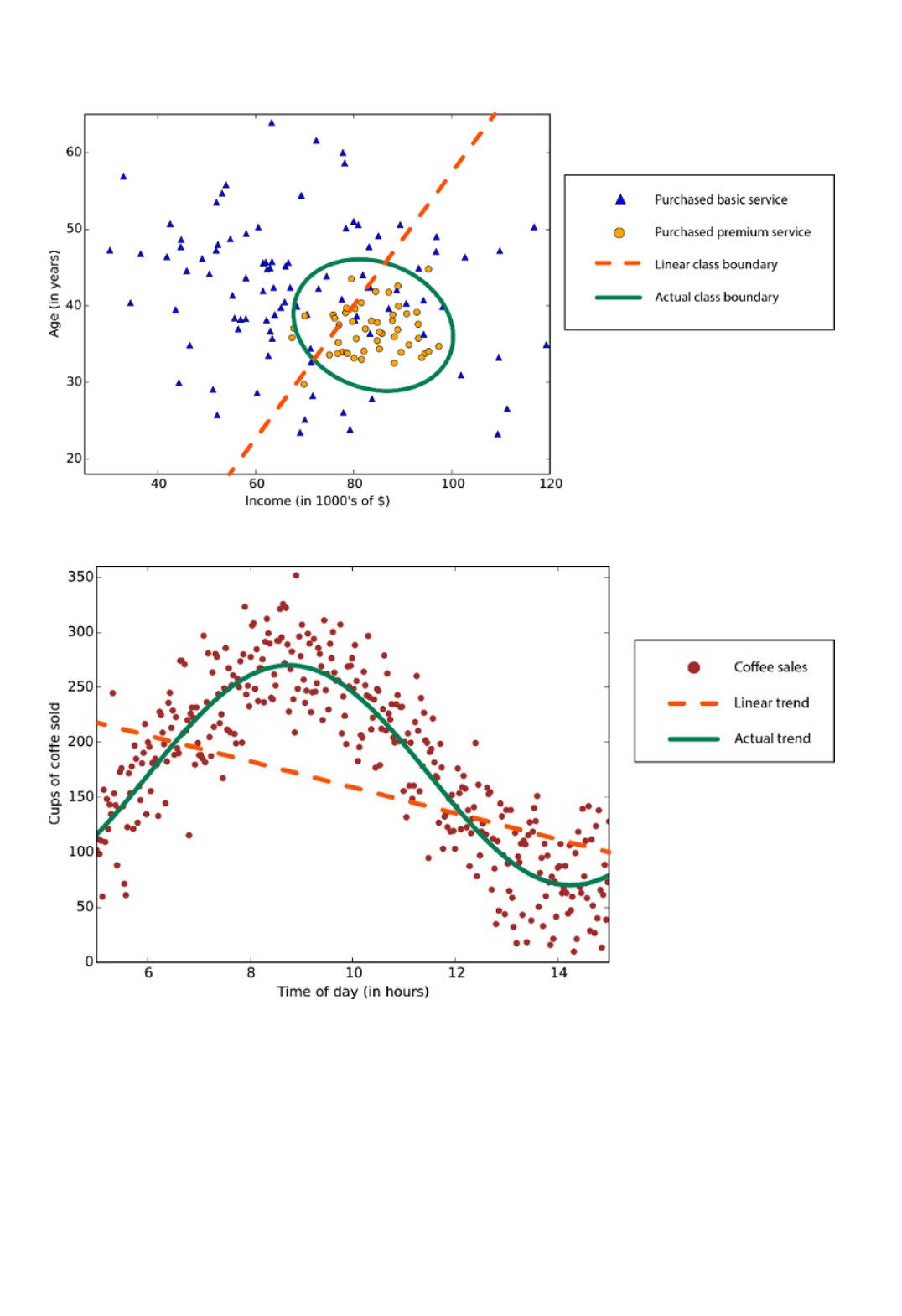

Data exploration, descriptive analytics, and predictive analyticsData exploration, descriptive analytics, and predictive analytics

Supervised and unsupervised learningSupervised and unsupervised learning

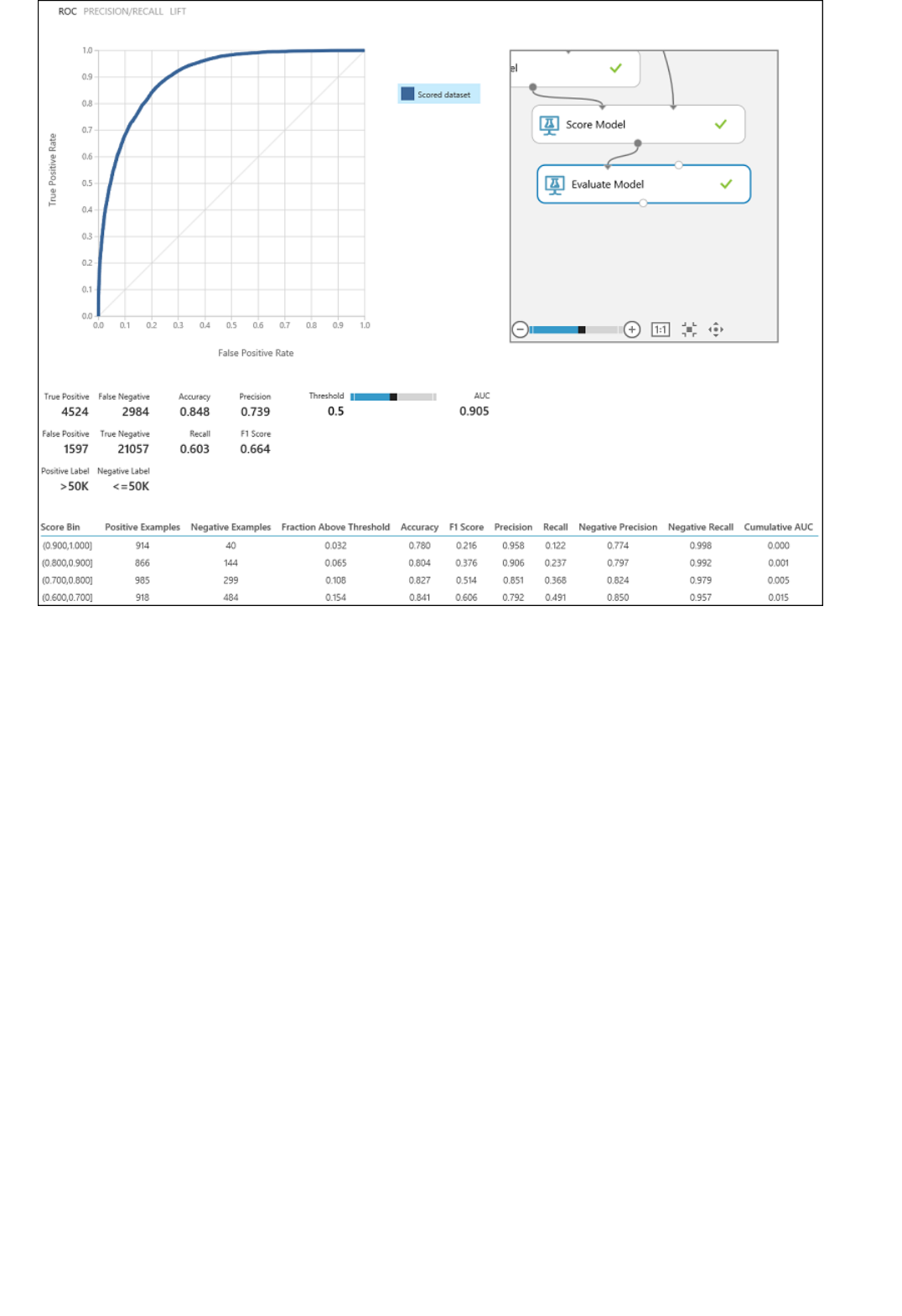

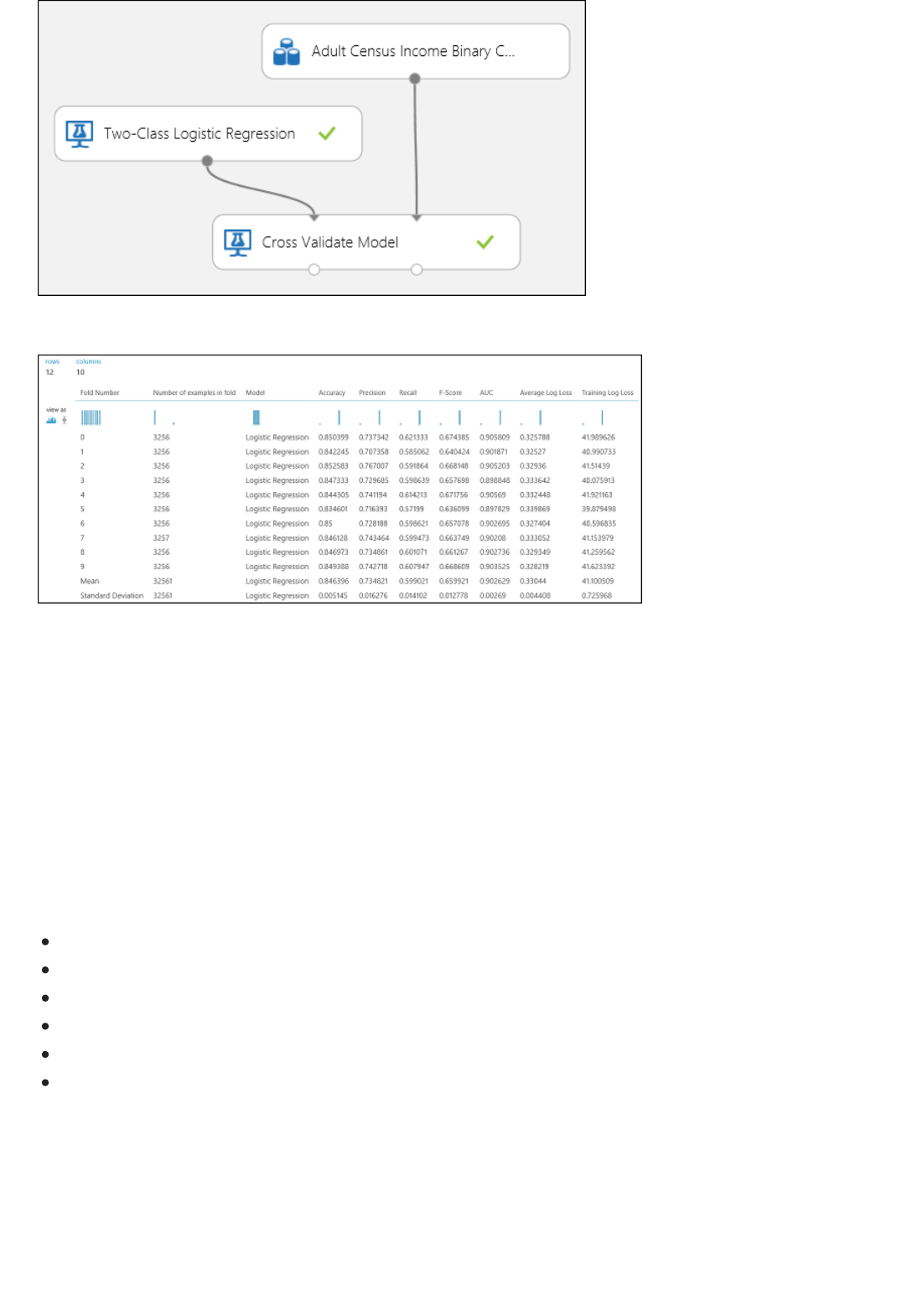

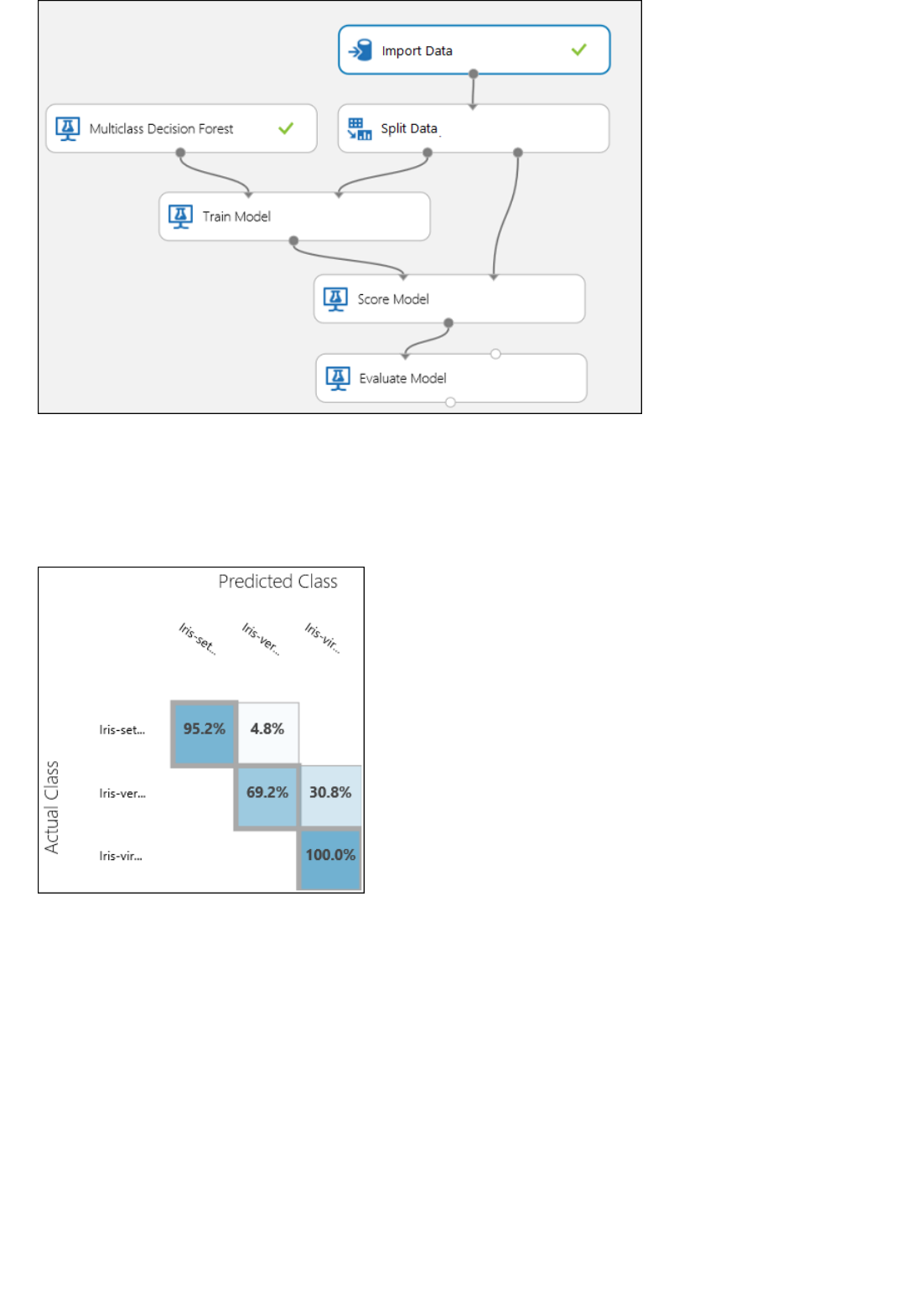

Model training and evaluationModel training and evaluation

Training dataTraining data

Evaluation dataEvaluation data

Other common machine learning terms

Machine learning terms can be confusing. Here are definitions of key terms to help you. Use comments following

to tell us about any other term you'd like defined.

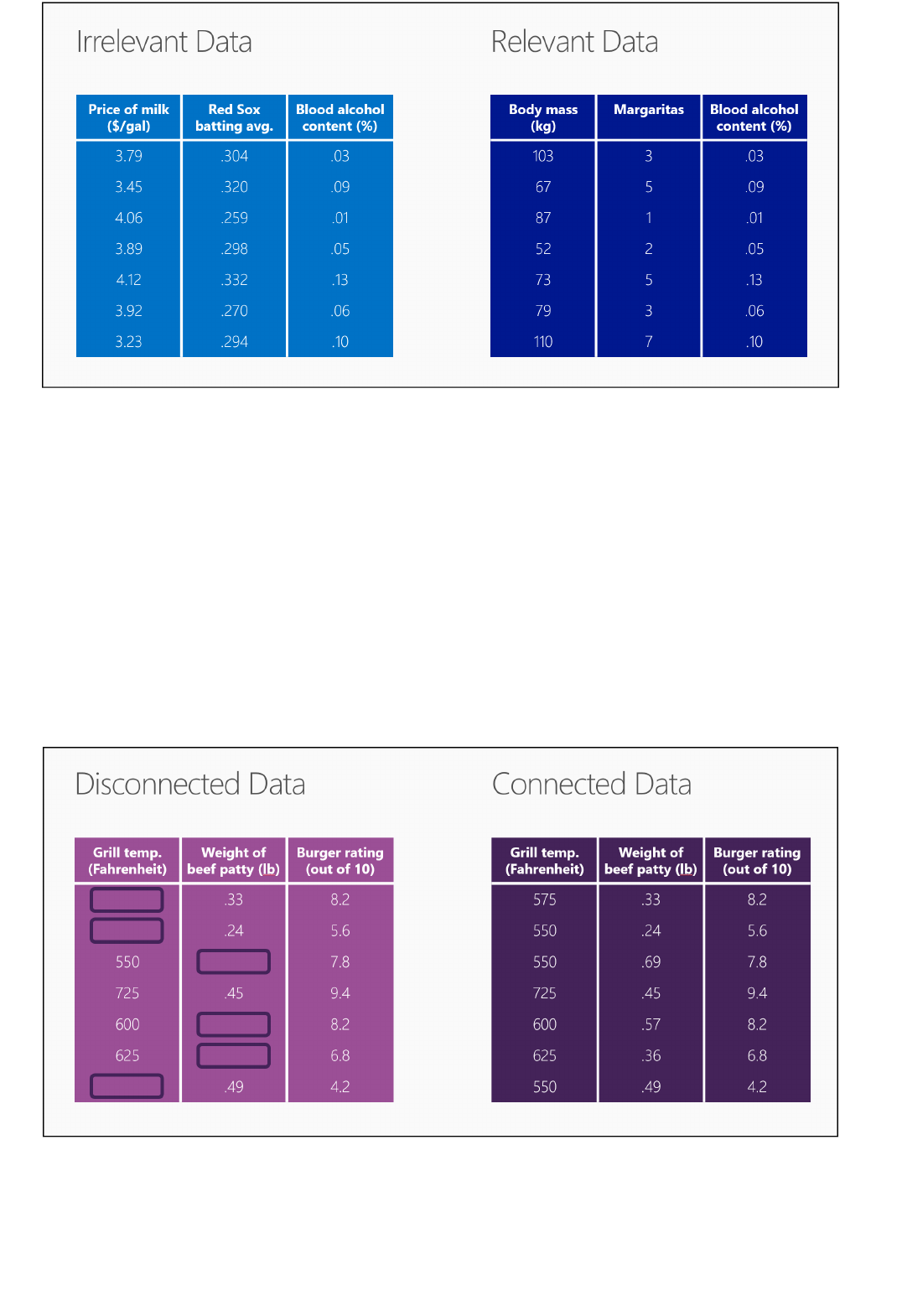

Data exploration is the process of gathering information about a large and often unstructured data set in order

to find characteristics for focused analysis.

Data mining refers to automated data exploration.

Descriptive analytics is the process of analyzing a data set in order to summarize what happened. The vast

majority of business analytics - such as sales reports, web metrics, and social networks analysis - are descriptive.

Predictive analytics is the process of building models from historical or current data in order to forecast future

outcomes.

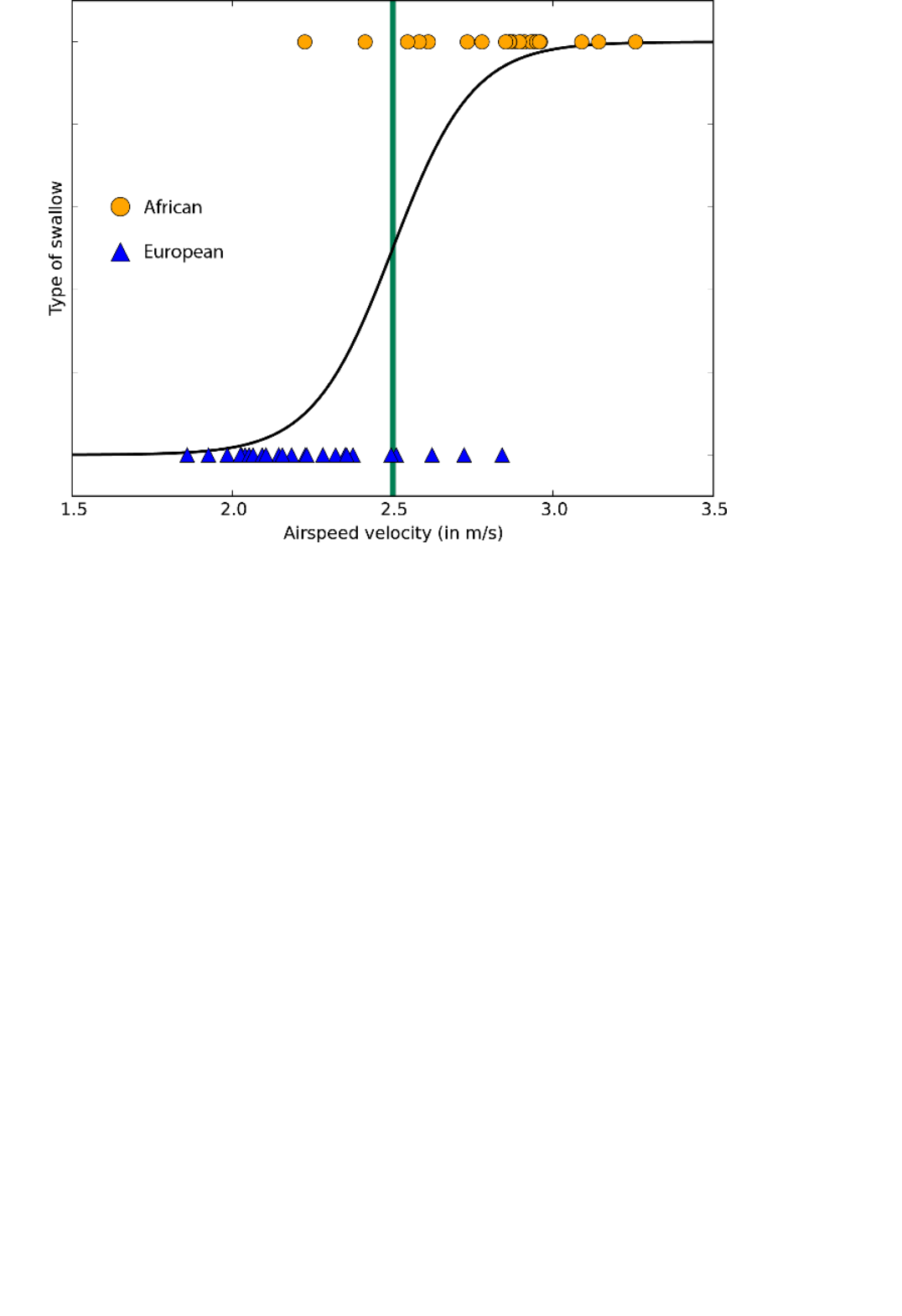

Supervised learning algorithms are trained with labeled data - in other words, data comprised of examples of the

answers wanted. For instance, a model that identifies fraudulent credit card use would be trained from a data set

with labeled data points of known fraudulent and valid charges. Most machine learning is supervised.

Unsupervised learning is used on data with no labels, and the goal is to find relationships in the data. For

instance, you might want to find groupings of customer demographics with similar buying habits.

A machine learning model is an abstraction of the question you are trying to answer or the outcome you want to

predict. Models are trained and evaluated from existing data.

When you train a model from data, you use a known data set and make adjustments to the model based on the

data characteristics to get the most accurate answer. In Azure Machine Learning, a model is built from an

algorithm module that processes training data and functional modules, such as a scoring module.

In supervised learning, if you're training a fraud detection model, you use a set of transactions that are labeled as

either fraudulent or valid. You split your data set randomly, and use part to train the model and part to test or

evaluate the model.

Once you have a trained model, evaluate the model using the remaining test data. You use data you already know

the outcomes for, so that you can tell whether your model predicts accurately.

algorithm: A self-contained set of rules used to solve problems through data processing, math, or automated

reasoning.

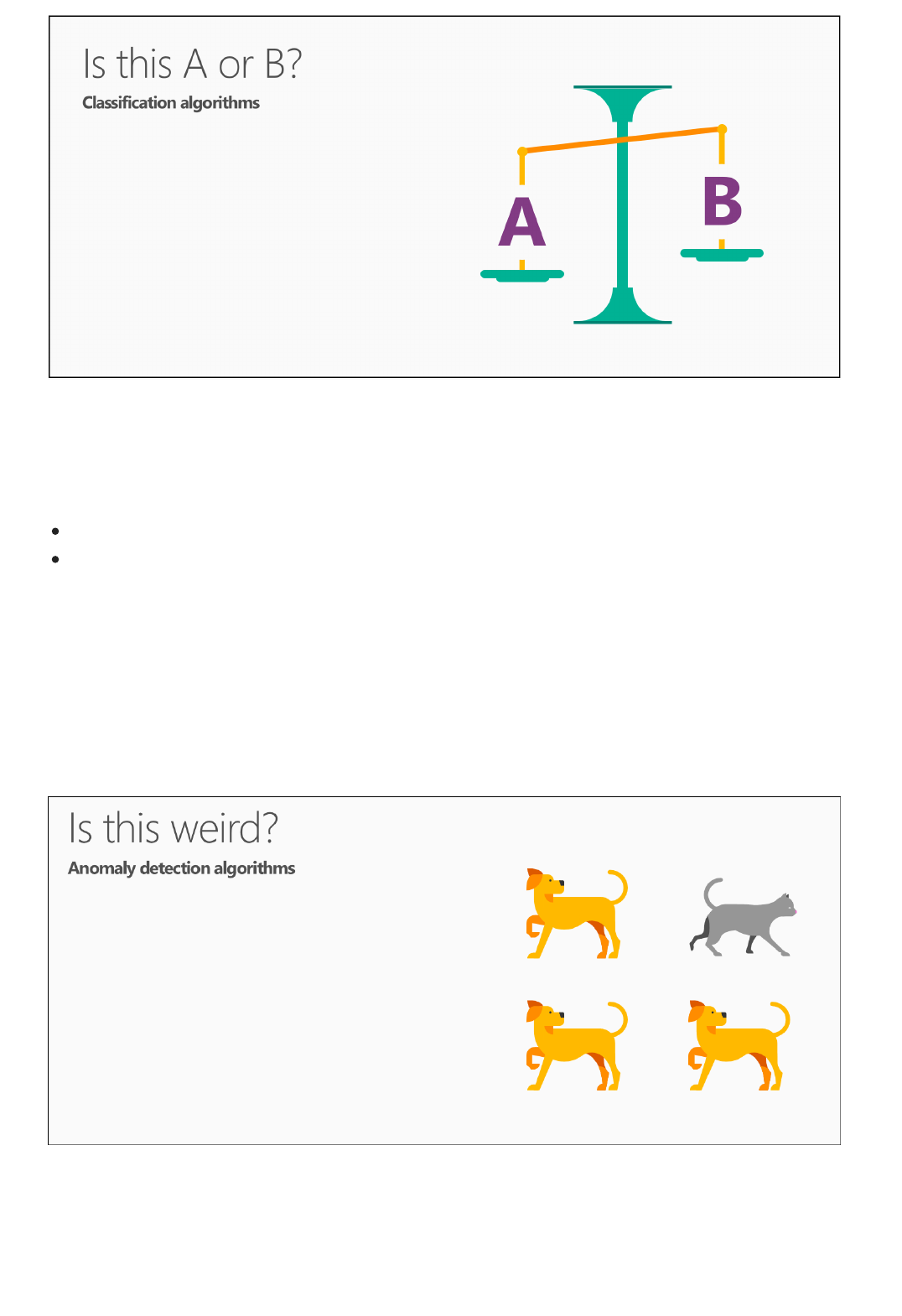

anomaly detection: A model that flags unusual events or values and helps you discover problems. For

example, credit card fraud detection looks for unusual purchases.

categorical data: Data that is organized by categories and that can be divided into groups. For example a

categorical data set for autos could specify year, make, model, and price.

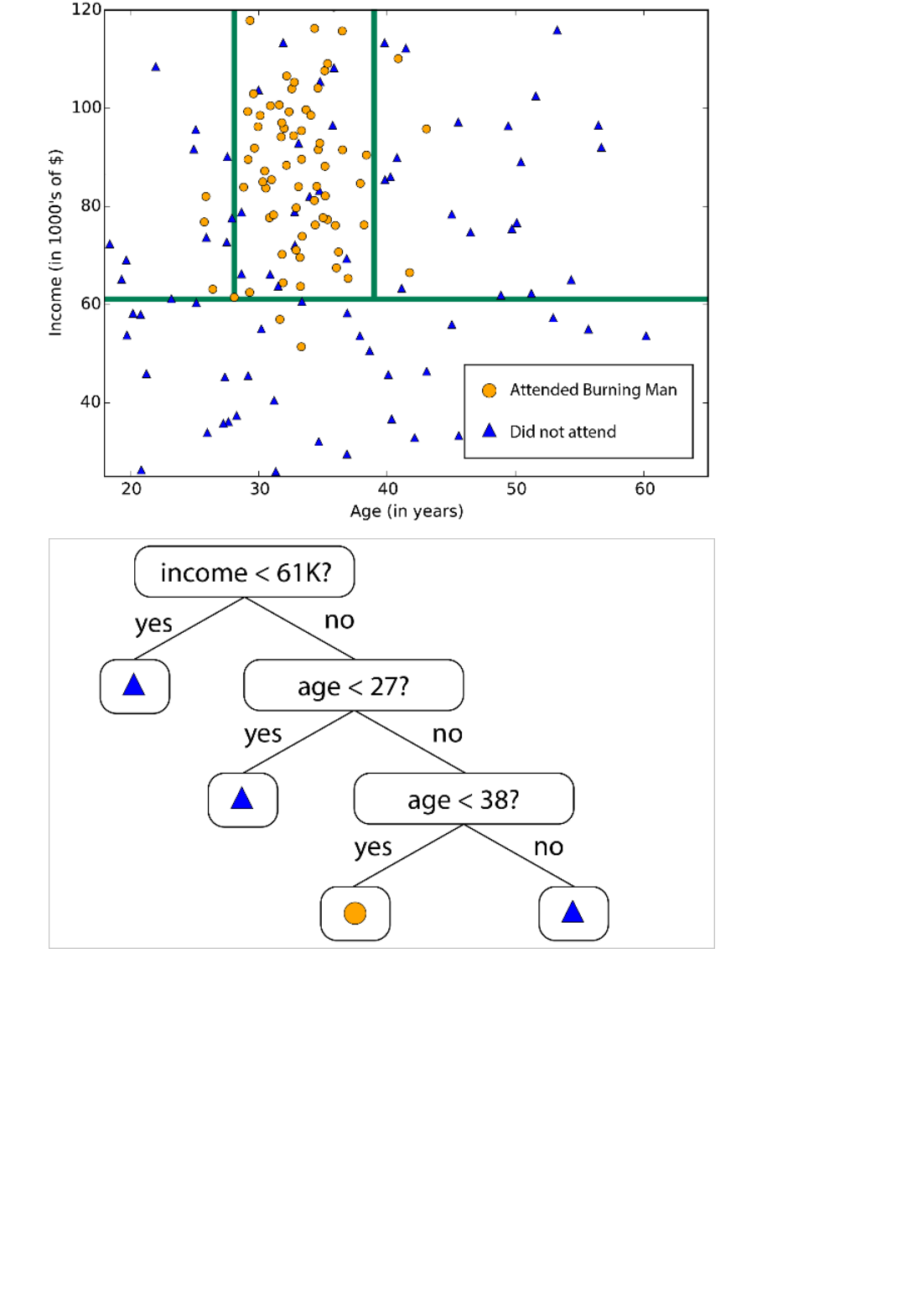

classification: A model for organizing data points into categories based on a data set for which category

groupings are already known.

feature engineering: The process of extracting or selecting features related to a data set in order to enhance

the data set and improve outcomes. For instance, airfare data could be enhanced by days of the week and

holidays. See Feature selection and engineering in Azure Machine Learning.

module: A functional part in a Machine Learning Studio model, such as the Enter Data module that enables

Next steps

entering and editing small data sets. An algorithm is also a type of module in Machine Learning Studio.

model: A supervised learning model is the product of a machine learning experiment comprised of training

data, an algorithm module, and functional modules, such as a Score Model module.

numerical data: Data that has meaning as measurements (continuous data) or counts (discrete data). Also

referred to as quantitative data.

partition: The method by which you divide data into samples. See Partition and Sample for more information.

prediction: A prediction is a forecast of a value or values from a machine learning model. You might also see

the term "predicted score." However, predicted scores are not the final output of a model. An evaluation of the

model follows the score.

regression: A model for predicting a value based on independent variables, such as predicting the price of a car

based on its year and make.

score: A predicted value generated from a trained classification or regression model, using the Score Model

module in Machine Learning Studio. Classification models also return a score for the probability of the

predicted value. Once you've generated scores from a model, you can evaluate the model's accuracy using the

Evaluate Model module.

sample: A part of a data set intended to be representative of the whole. Samples can be selected randomly or

based on specific features of the data set.

You can learn the basics of predictive analytics and machine learning using a step-by-step tutorial and by building

on samples.

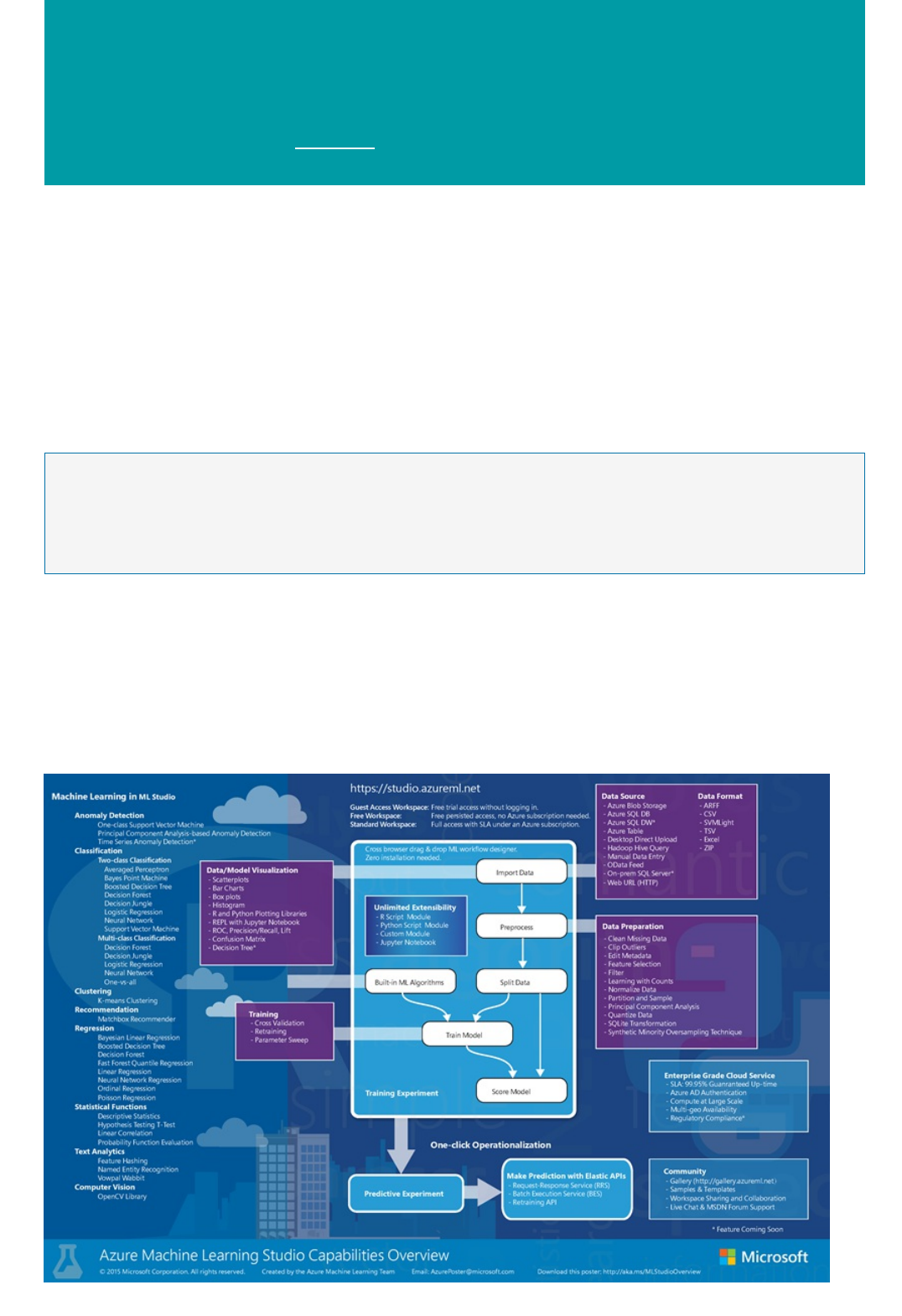

Overview diagram of Azure Machine Learning Studio

capabilities

3/21/2018 • 1 min to read • Edit Online

NOTENOTE

Download the Machine Learning Studio overview diagram

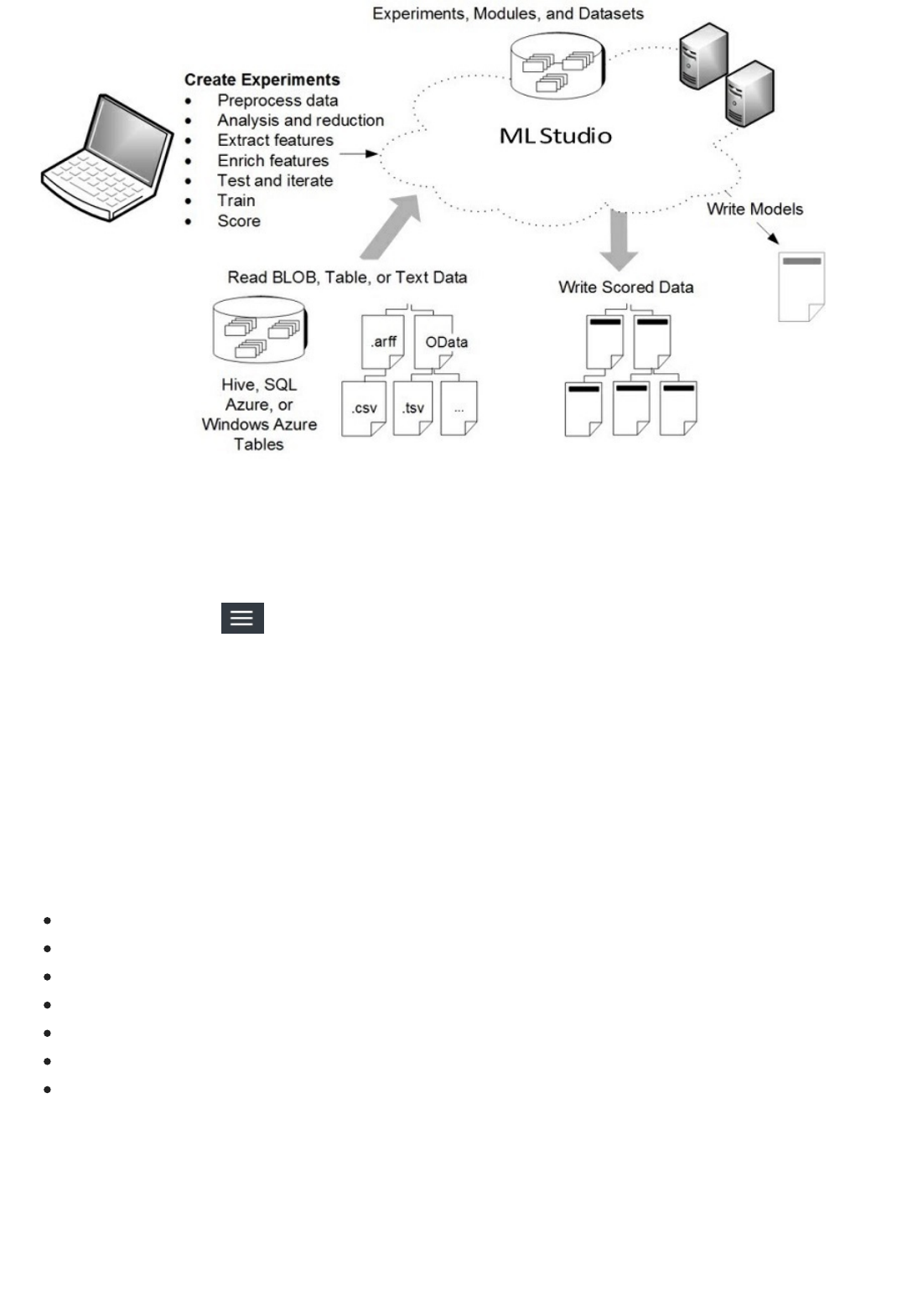

The Microsoft Azure Machine Learning Studio Capabilities Overview diagram gives you a high-level

overview of how you can use Machine Learning Studio to develop a predictive analytics model and operationalize

it in the Azure cloud.

Azure Machine Learning Studio has available a large number of machine learning algorithms, along with modules

that help with data input, output, preparation, and visualization. Using these components you can develop a

predictive analytics experiment, iterate on it, and use it to train your model. Then with one click you can

operationalize your model in the Azure cloud so that it can be used to score new data.

This diagram demonstrates how all those pieces fit together.

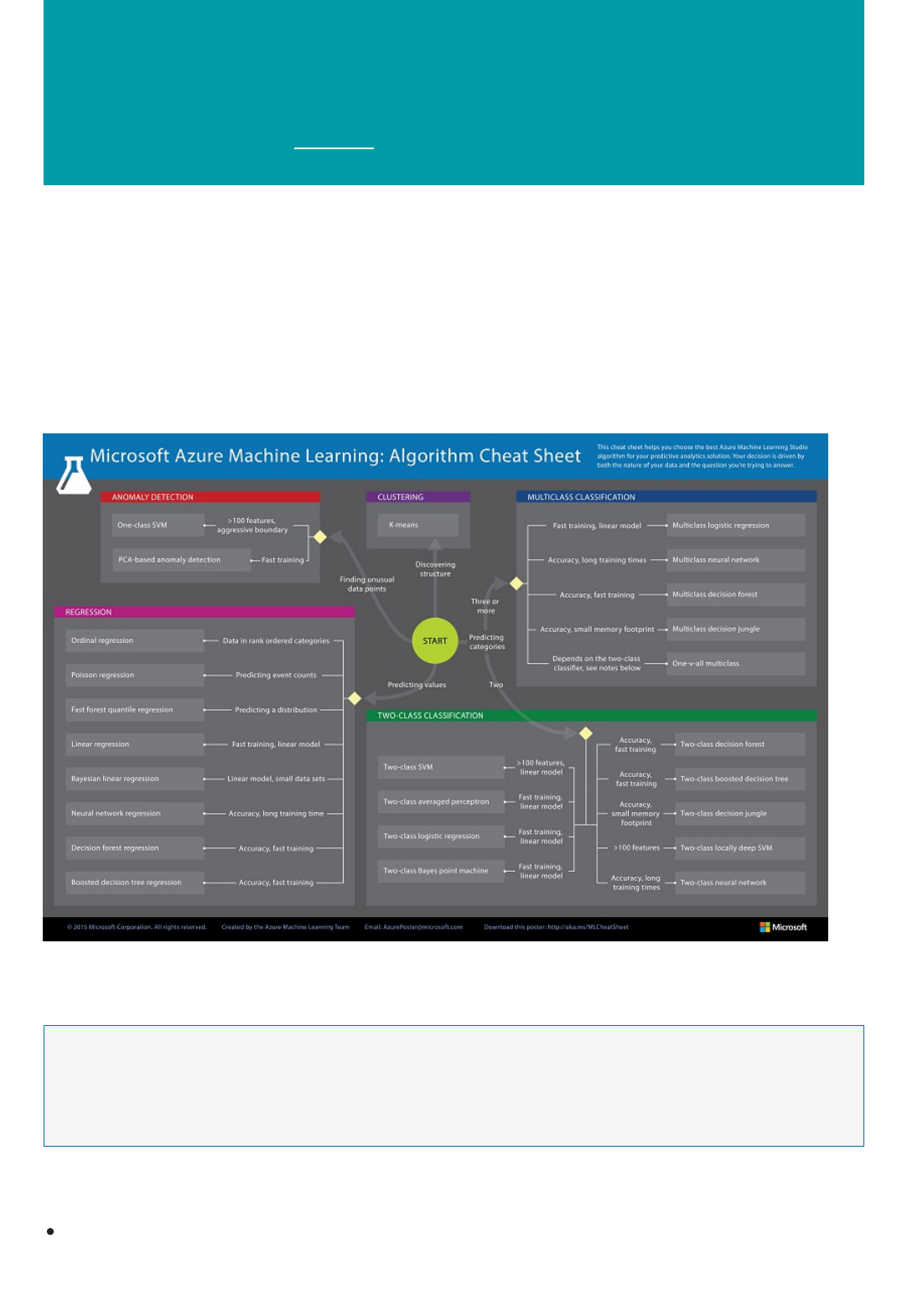

See Machine learning algorithm cheat sheet for Microsoft Azure Machine Learning Studio for additional help in navigating

through and choosing the machine learning algorithms available in Machine Learning Studio.

Download the Microsoft Azure Machine Learning Studio Capabilities Overview diagram and get a high-

level view of the capabilities of Machine Learning Studio. To keep it nearby, you can print the diagram in tabloid

size (11 x 17 in.).

Download the diagram here: Microsoft Azure Machine Learning Studio Capabilities Overview

More help with Machine Learning Studio

NOTENOTE

For an overview of Microsoft Azure Machine Learning, see Introduction to machine learning on Microsoft

Azure

For an overview of Machine Learning Studio, see What is Azure Machine Learning Studio?.

For a detailed discussion of the machine learning algorithms available in Machine Learning Studio, see How to

choose algorithms for Microsoft Azure Machine Learning.

You can try Azure Machine Learning for free. No credit card or Azure subscription is required. Get started now.

Downloadable Infographic: Machine learning basics

with algorithm examples

3/21/2018 • 1 min to read • Edit Online

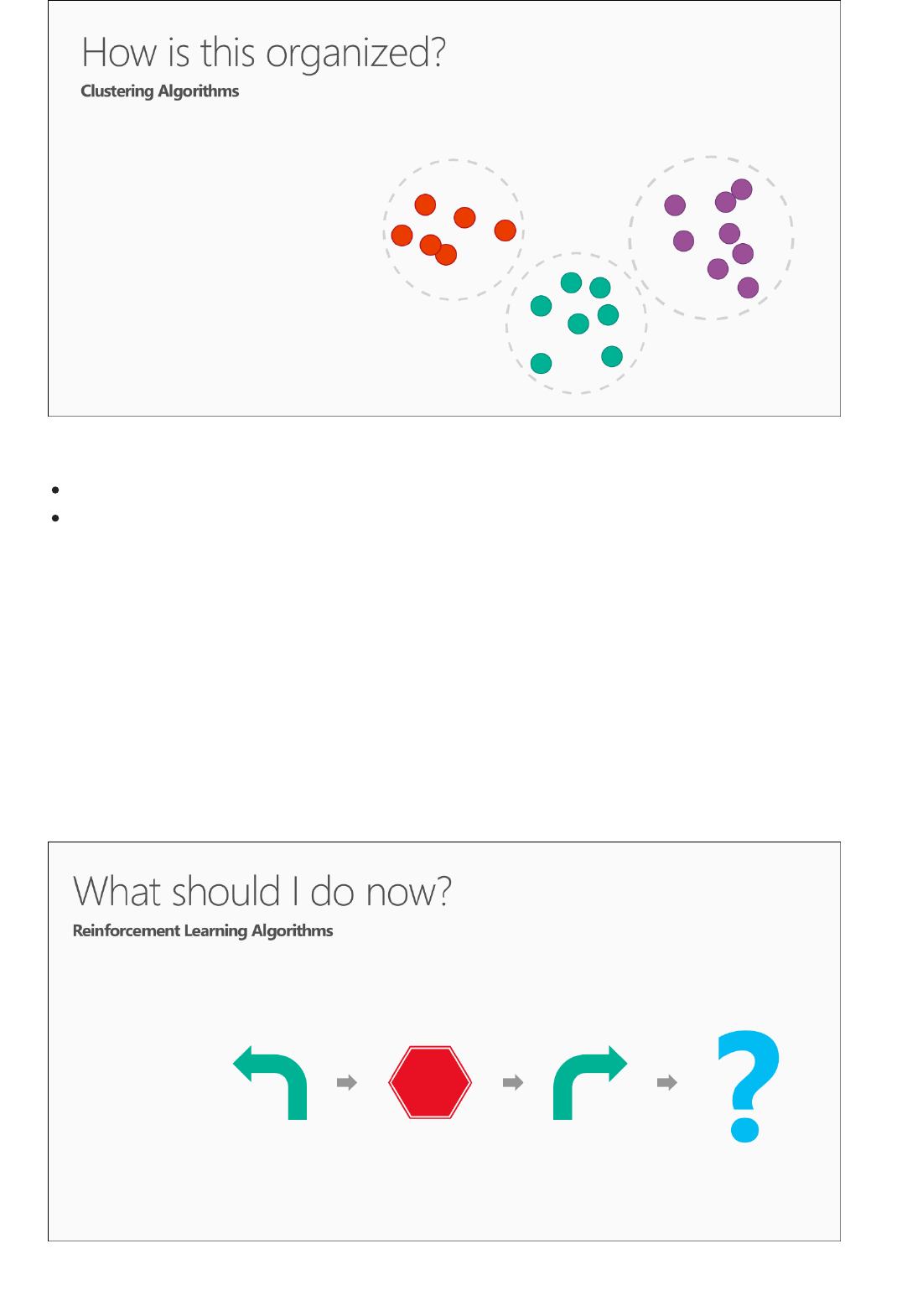

Popular algorithms in Machine Learning Studio

Download the infographic with algorithm examples

More help with algorithms for beginners and advanced users

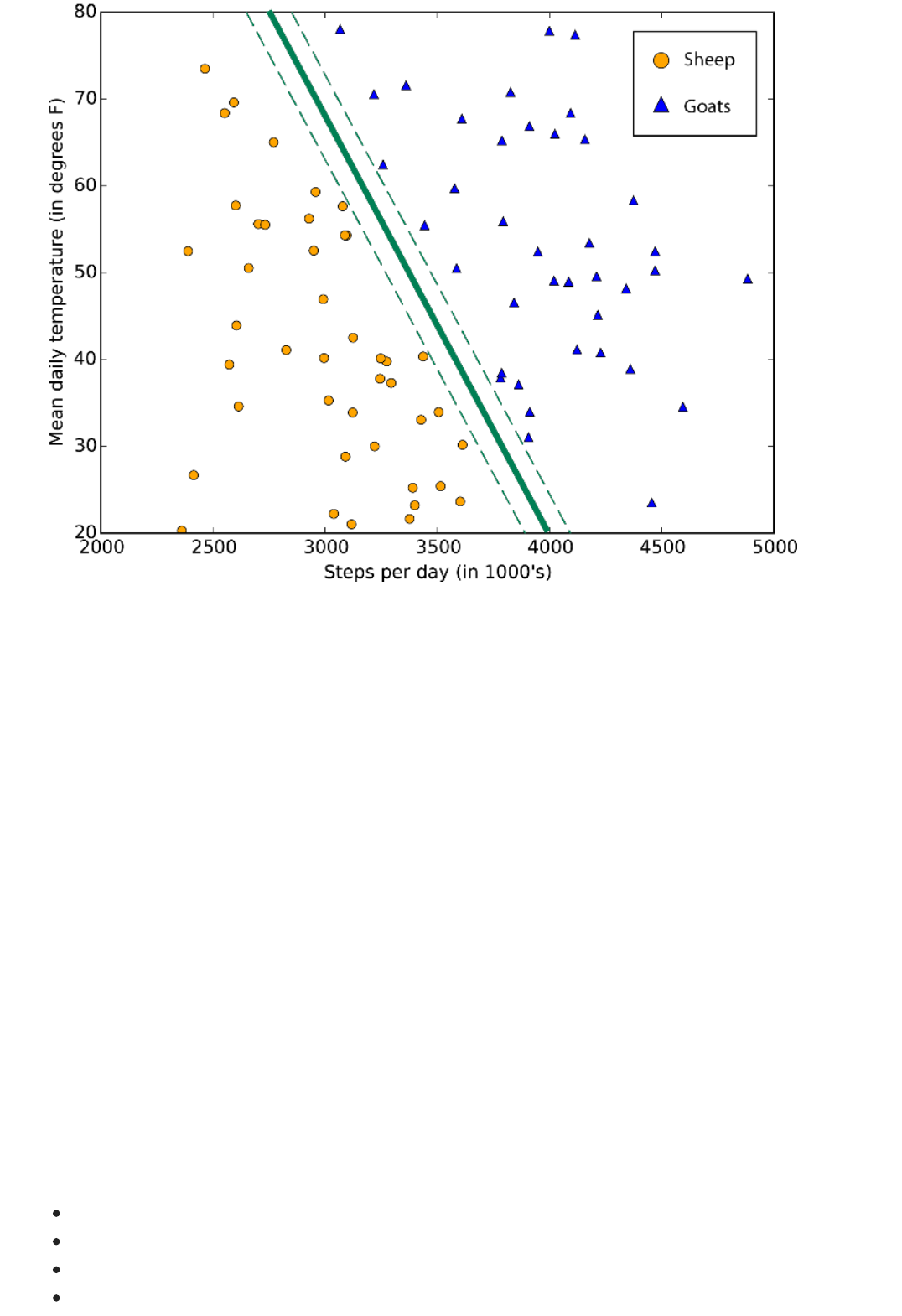

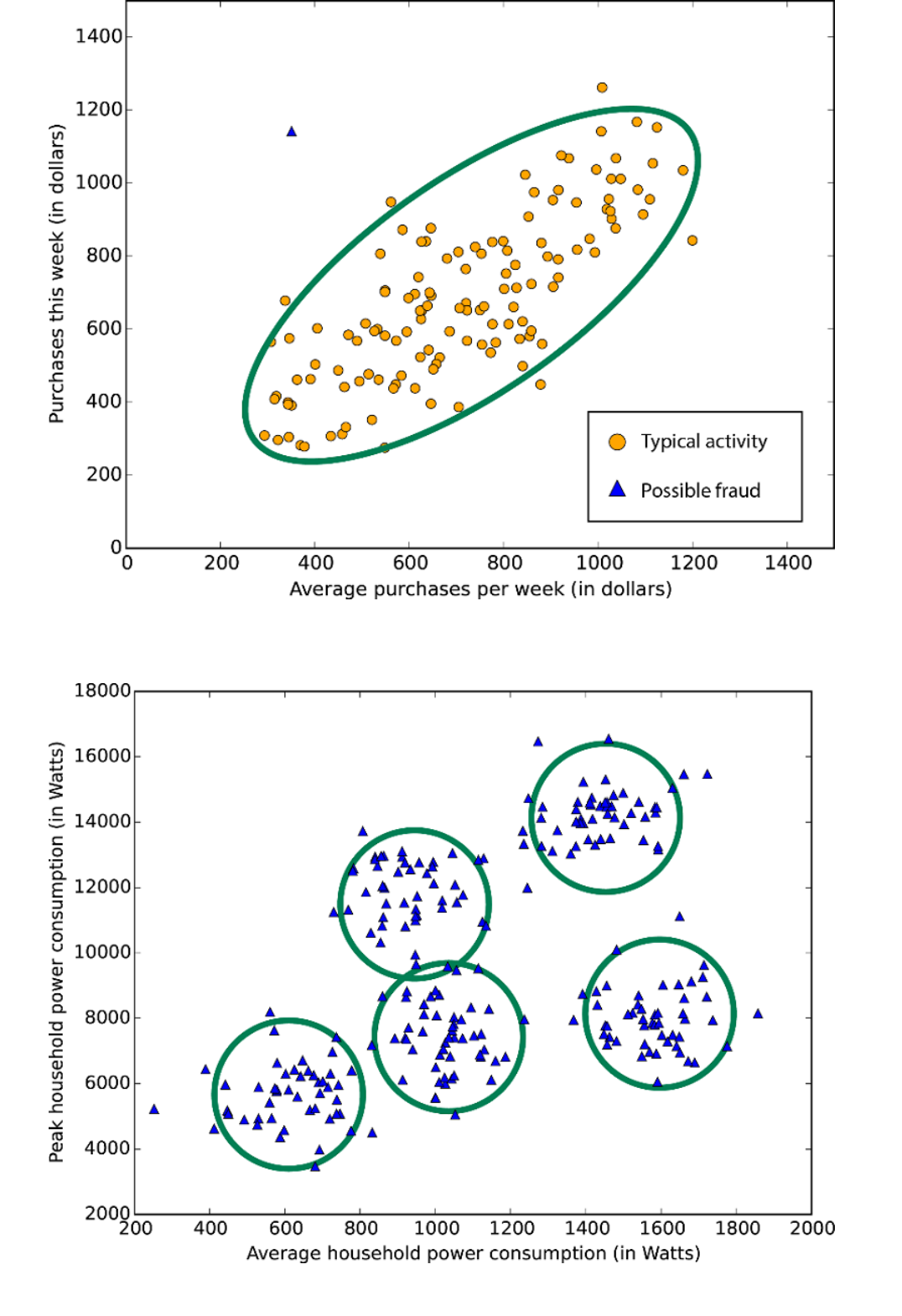

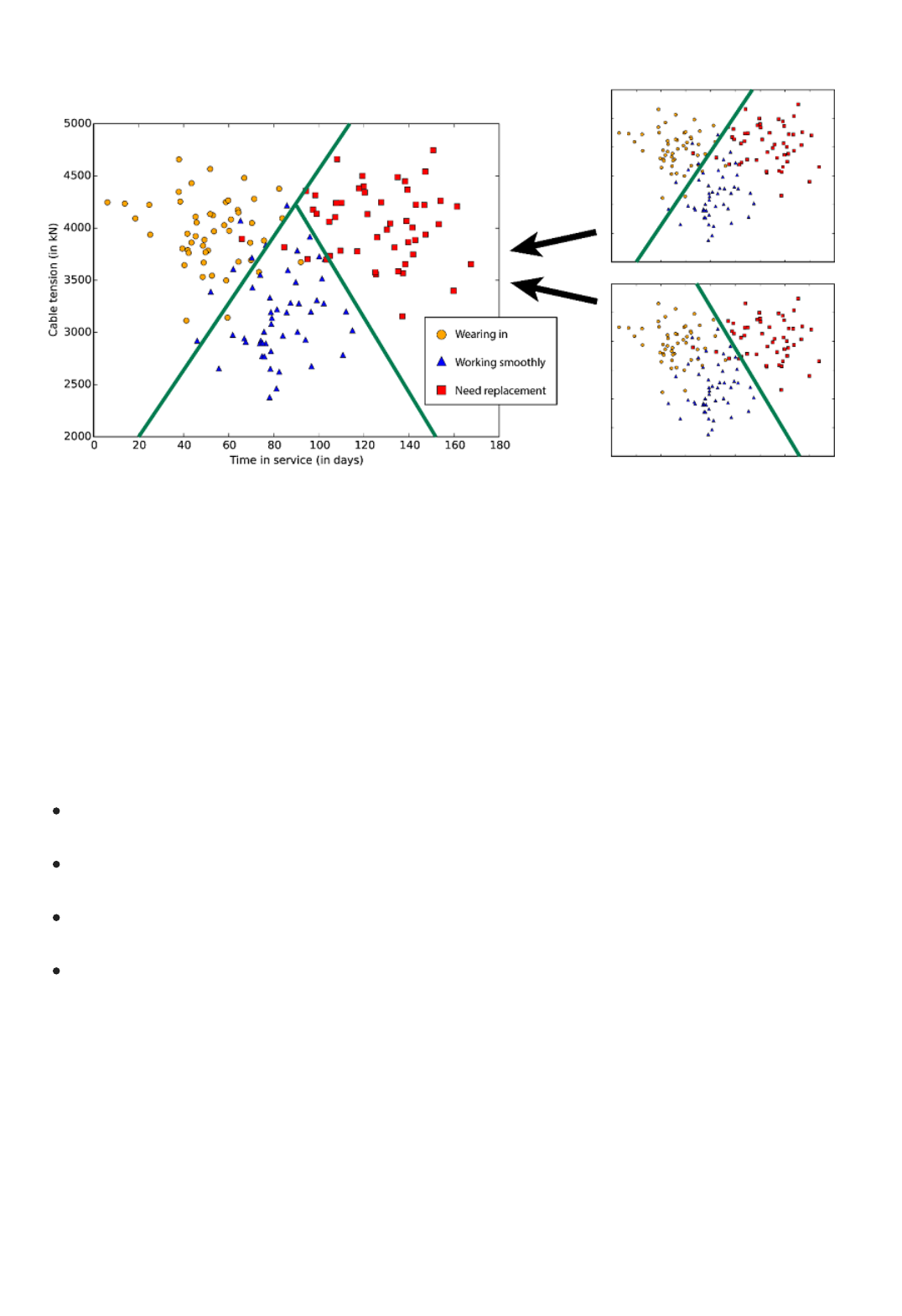

Download this easy-to-understand infographic overview of machine learning basics to learn about popular

algorithms used to answer common machine learning questions. Algorithm examples help the machine learning

beginner understand which algorithms to use and what they're used for.

Azure Machine Learning Studio comes with a large library of algorithms for predictive analytics. The infographic

identifies four popular families of algorithms - regression, anomaly detection, clustering, and classification - and

provides links to working examples in the Azure AI Gallery. The Gallery contains example experiments and

tutorials that demonstrate how these algorithms can be applied in many real-world solutions.

Download: Infographic of machine learning basics with links to algorithm examples (PDF)

For a deeper discussion of the different types of machine learning algorithms, how they're used, and how to

choose the right one for your solution, see How to choose algorithms for Microsoft Azure Machine Learning.

For a list by category of all the machine learning algorithms available in Machine Learning Studio, see Initialize

Model in the Machine Learning Studio Algorithm and Module Help.

For a complete alphabetical list of algorithms and modules in Machine Learning Studio, see A-Z list of Machine

Learning Studio modules in Machine Learning Studio Algorithm and Module Help.

NOTENOTE

To download and print a diagram that gives an overview of the capabilities of Machine Learning Studio, see

Overview diagram of Azure Machine Learning Studio capabilities.

For an overview of the Azure AI Gallery and the many community-generated resources available there, see

Share and discover resources in the Azure AI Gallery.

You can try Azure Machine Learning for free. No credit card or Azure subscription is required. Get started now.

Azure Machine Learning frequently asked questions:

Billing, capabilities, limitations, and support

4/25/2018 • 29 min to read • Edit Online

General questions

NOTENOTE

Here are some frequently asked questions (FAQs) and corresponding answers about Azure Machine Learning, a

cloud service for developing predictive models and operationalizing solutions through web services. These FAQs

provide questions about how to use the service, which includes the billing model, capabilities, limitations, and

support.

Have a question you can't find here?

Azure Machine Learning has a forum on MSDN where members of the data science community can ask questions

about Azure Machine Learning. The Azure Machine Learning team monitors the forum. Go to the Azure Machine

Learning Forum to search for answers or to post a new question of your own.

What is Azure Machine Learning?

Azure Machine Learning is a fully managed service that you can use to create, test, operate, and manage predictive

analytic solutions in the cloud. With only a browser, you can sign in, upload data, and immediately start machine-

learning experiments. Drag-and-drop predictive modeling, a large pallet of modules, and a library of starting

templates make common machine-learning tasks simple and quick. For more information, see the Azure Machine

Learning service overview. For an introduction to machine learning that explains key terminology and concepts,

see Introduction to Azure Machine Learning.

You can try Azure Machine Learning for free. No credit card or Azure subscription is required. Get started now.

What is Machine Learning Studio?

Machine Learning Studio is a workbench environment that you access by using a web browser. Machine Learning

Studio hosts a pallet of modules in a visual composition interface that helps you build an end-to-end, data-science

workflow in the form of an experiment.

For more information about Machine Learning Studio, see What is Machine Learning Studio?

What is the Machine Learning API service?

The Machine Learning API service enables you to deploy predictive models, like those that are built into Machine

Learning Studio, as scalable, fault-tolerant, web services. The web services that the Machine Learning API service

creates are REST APIs that provide an interface for communication between external applications and your

predictive analytics models.

For more information, see How to consume an Azure Machine Learning Web service.

Where are my Classic web services listed? Where are my New (Azure Resource Manager-based) web

services listed?

Web services created using the Classic deployment model and web services created using the New Azure

Resource Manager deployment model are listed in the Microsoft Azure Machine Learning Web Services portal.

Azure Machine Learning questions

Machine Learning Studio questions

Import and export data for Machine LearningImport and export data for Machine Learning

How large can the data set be for my modules?How large can the data set be for my modules?

Classic web services are also listed in Machine Learning Studio on the Web services tab.

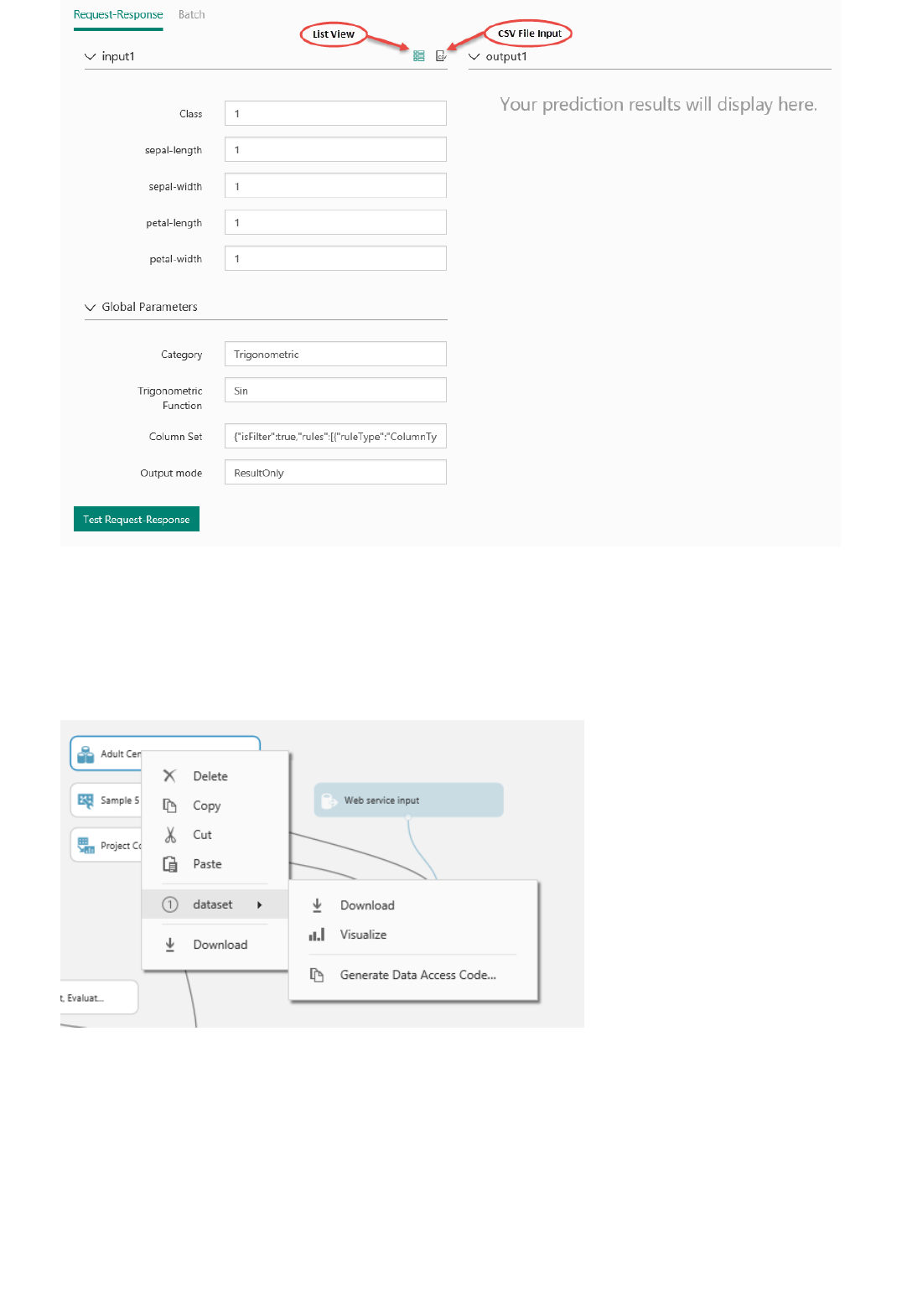

What are Azure Machine Learning web services?

Machine Learning web services provide an interface between an application and a Machine Learning workflow

scoring model. An external application can use Azure Machine Learning to communicate with a Machine Learning

workflow scoring model in real time. A call to a Machine Learning web service returns prediction results to an

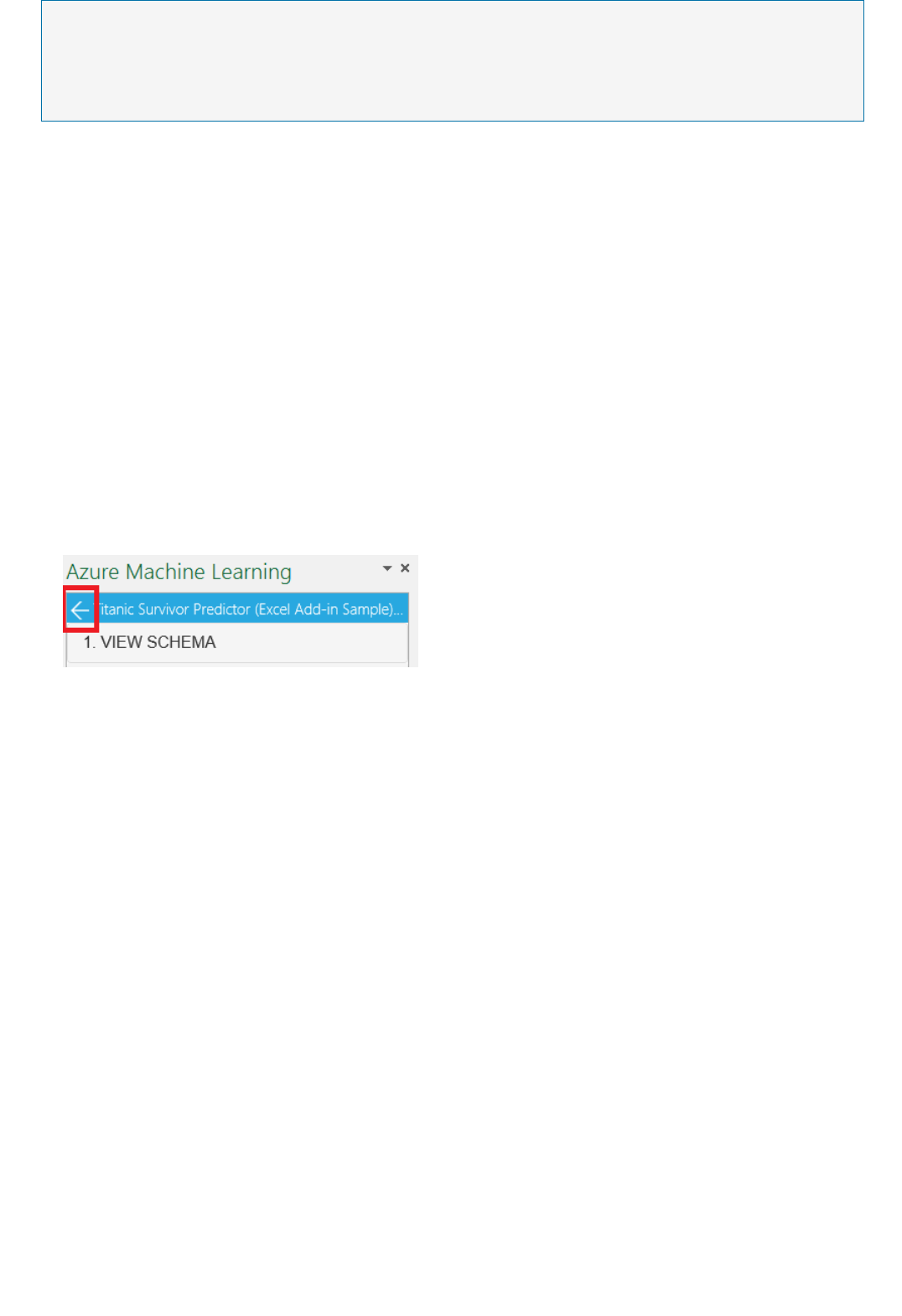

external application. To make a call to a web service, you pass an API key that was created when you deployed the

web service. A Machine Learning web service is based on REST, a popular architecture choice for web

programming projects.

Azure Machine Learning has two types of web services:

Request-Response Service (RRS): A low latency, highly scalable service that provides an interface to the

stateless models created and deployed by using Machine Learning Studio.

Batch Execution Service (BES): An asynchronous service that scores a batch for data records.

There are several ways to consume the REST API and access the web service. For example, you can write an

application in C#, R, or Python by using the sample code that's generated for you when you deployed the web

service.

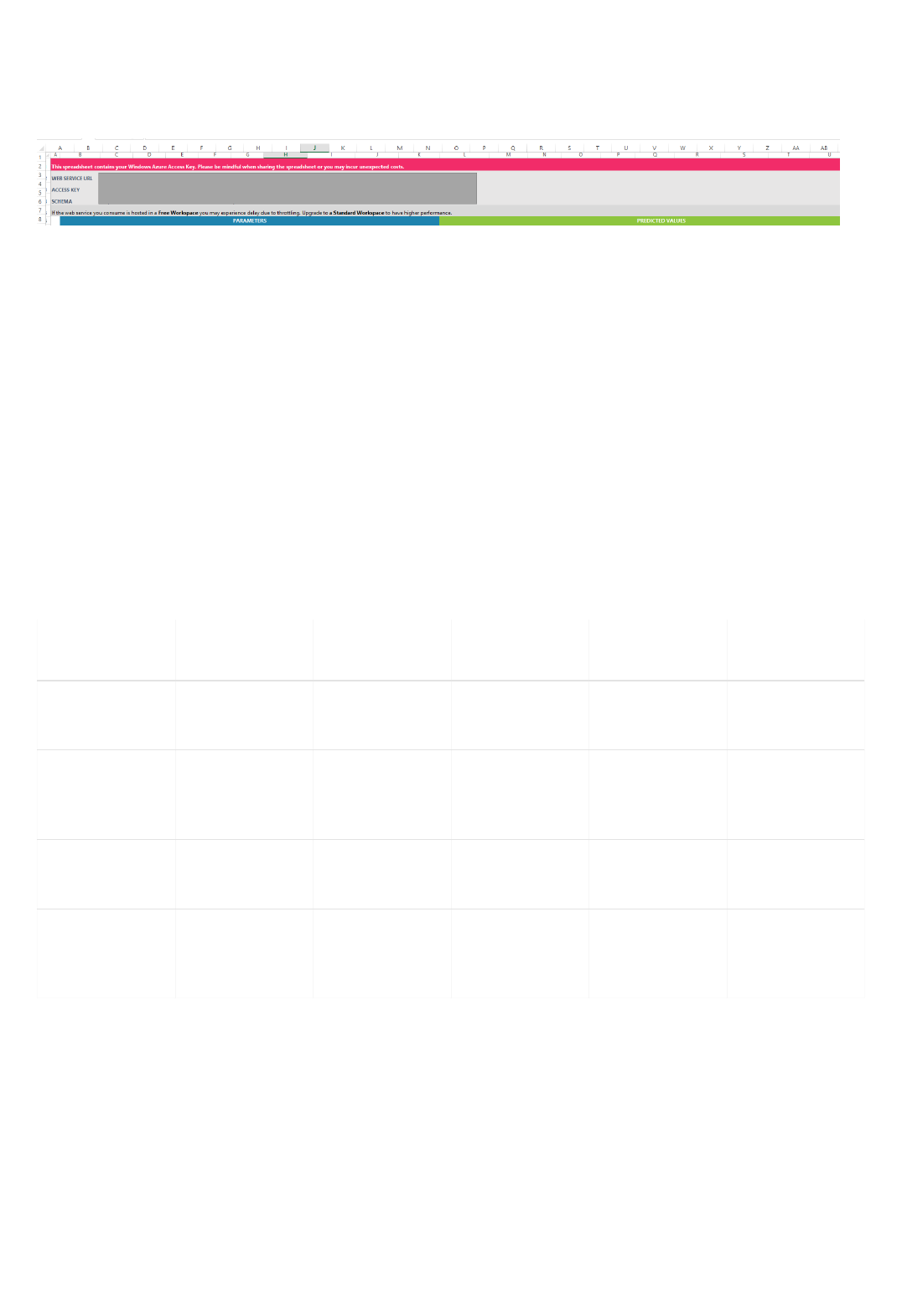

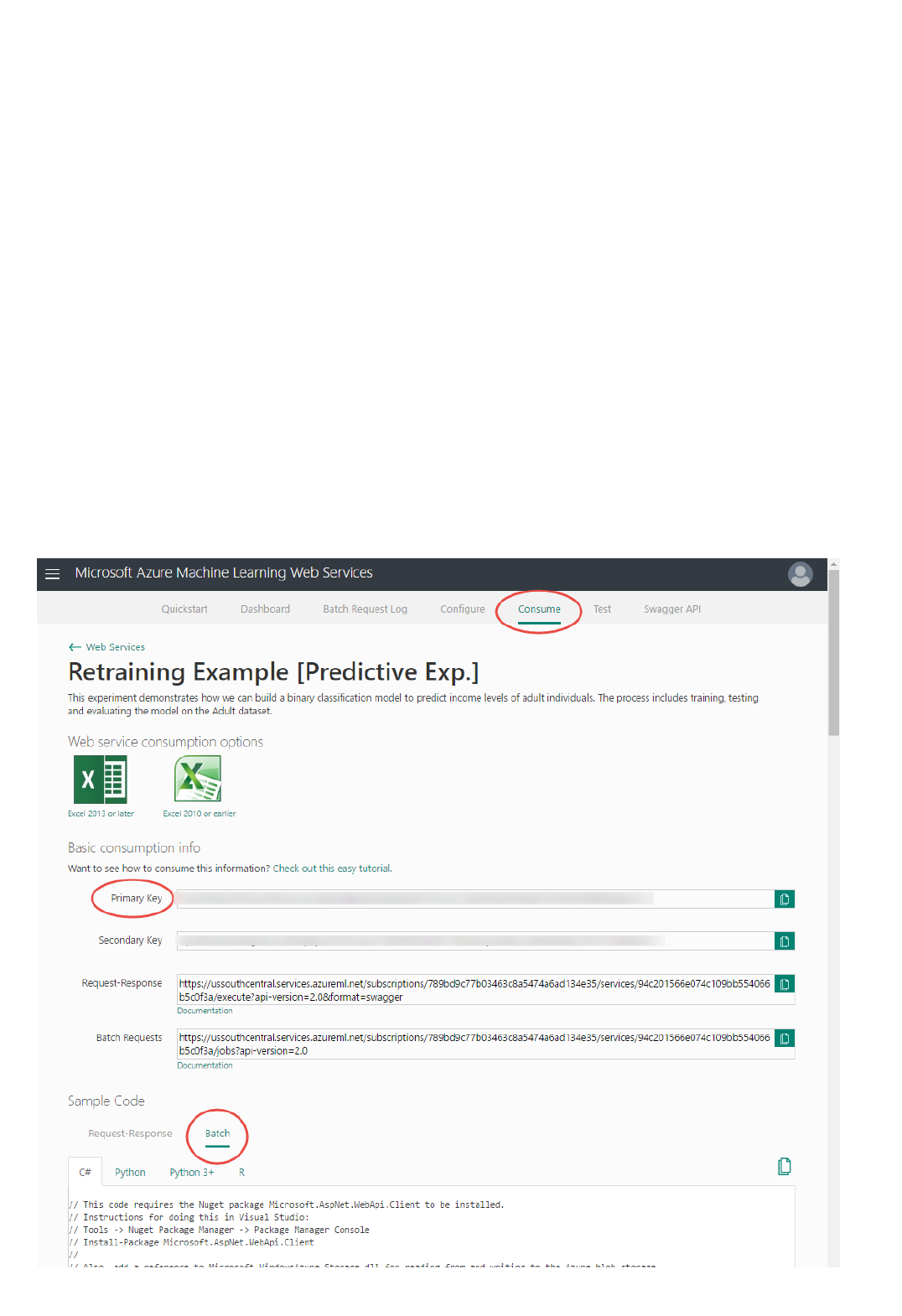

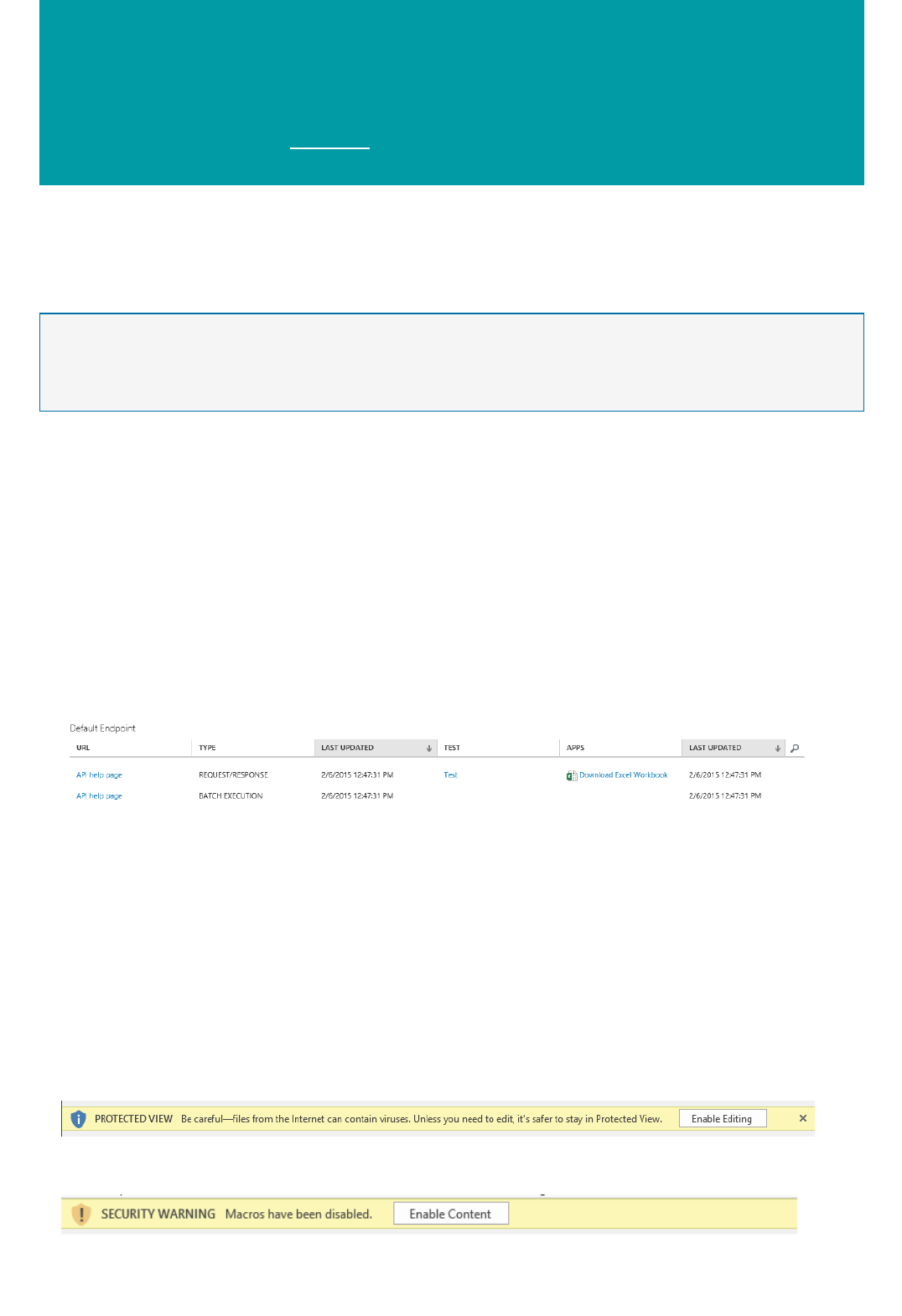

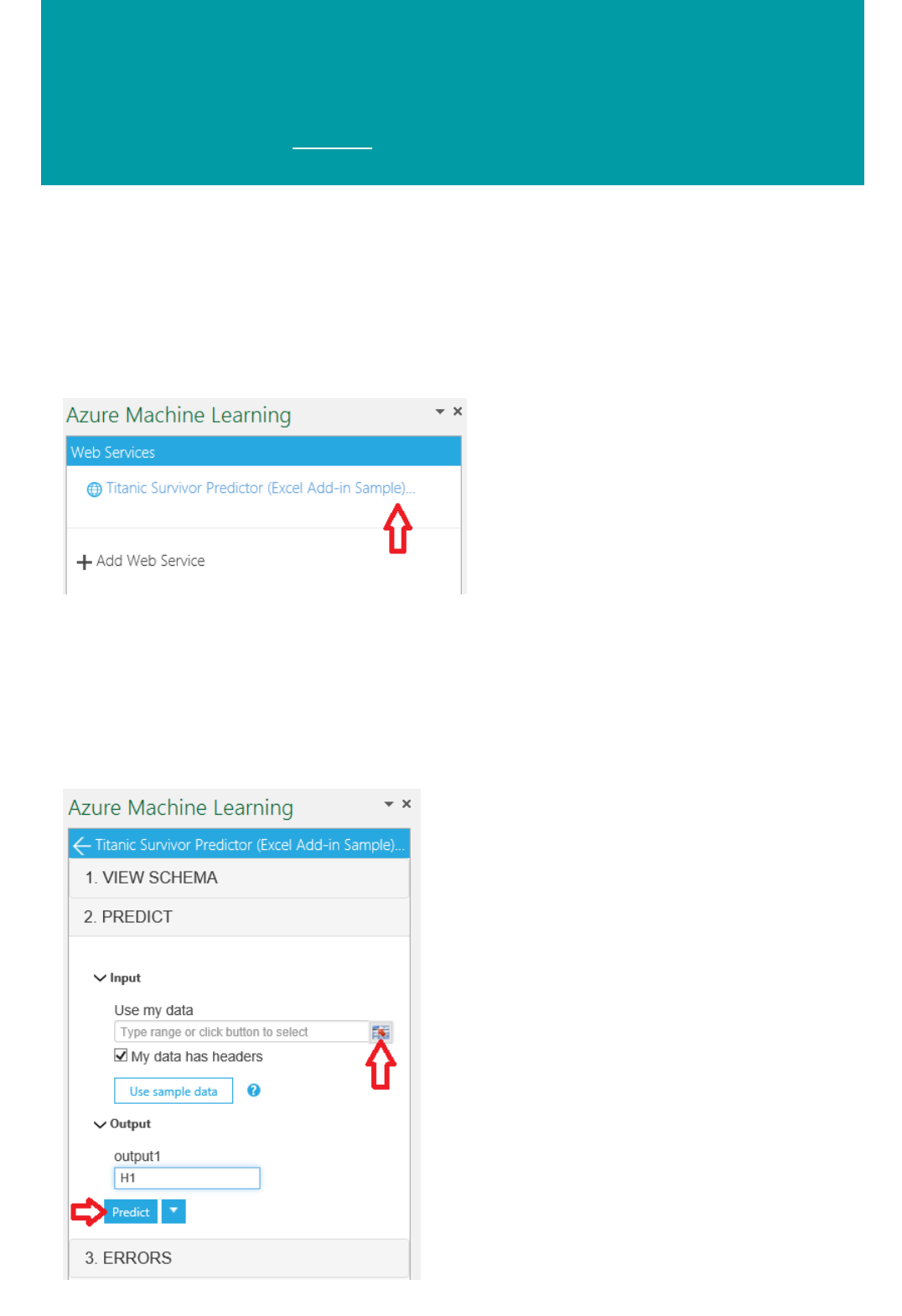

The sample code is available on:

The Consume page for the web service in the Azure Machine Learning Web Services portal

The API Help Page in the web service dashboard in Machine Learning Studio

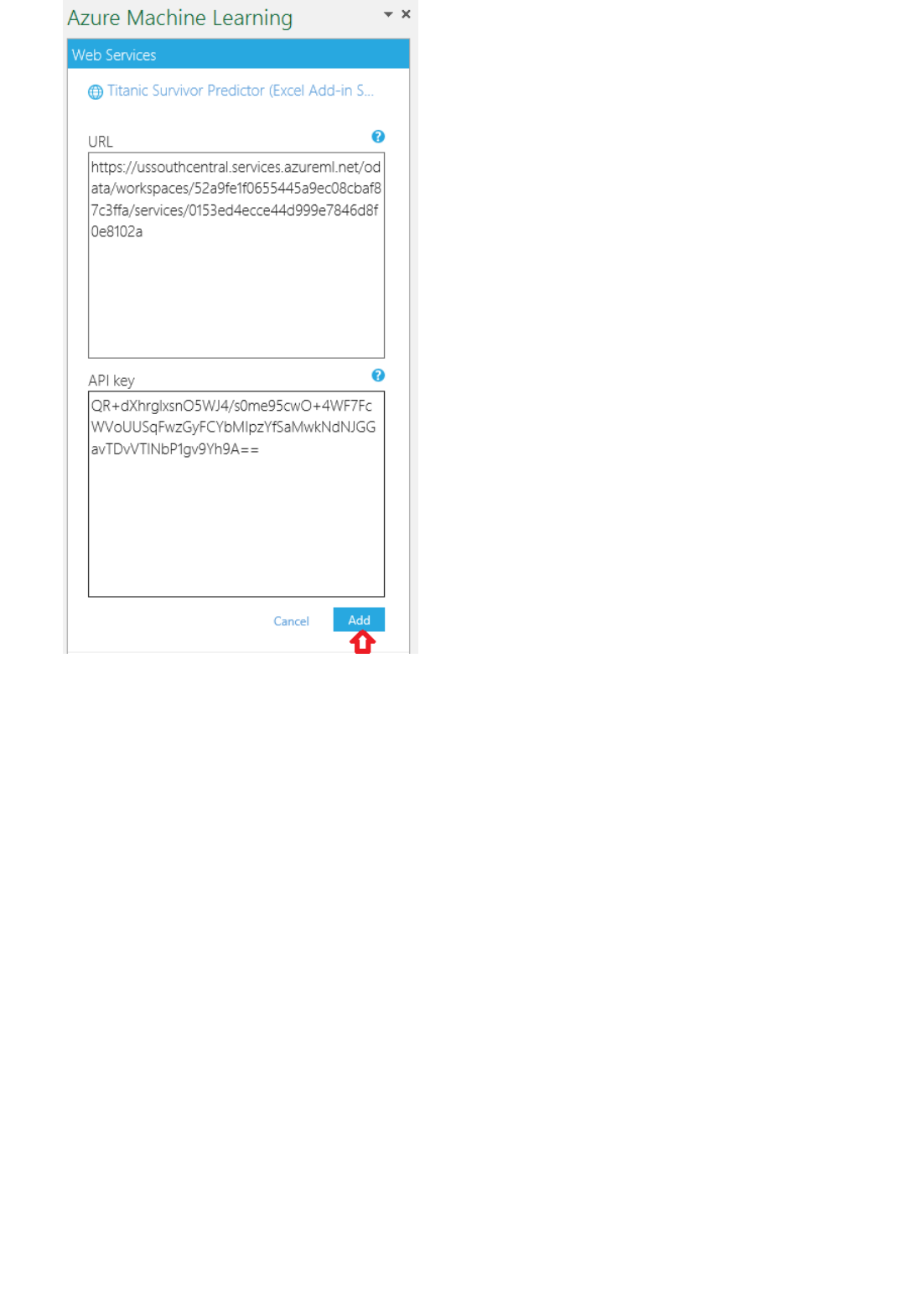

You can also use the sample Microsoft Excel workbook that's created for you and is available in the web service

dashboard in Machine Learning Studio.

What are the main updates to Azure Machine Learning?

For the latest updates, see What's new in Azure Machine Learning.

What data sources does Machine Learning support?

You can download data to a Machine Learning Studio experiment in three ways:

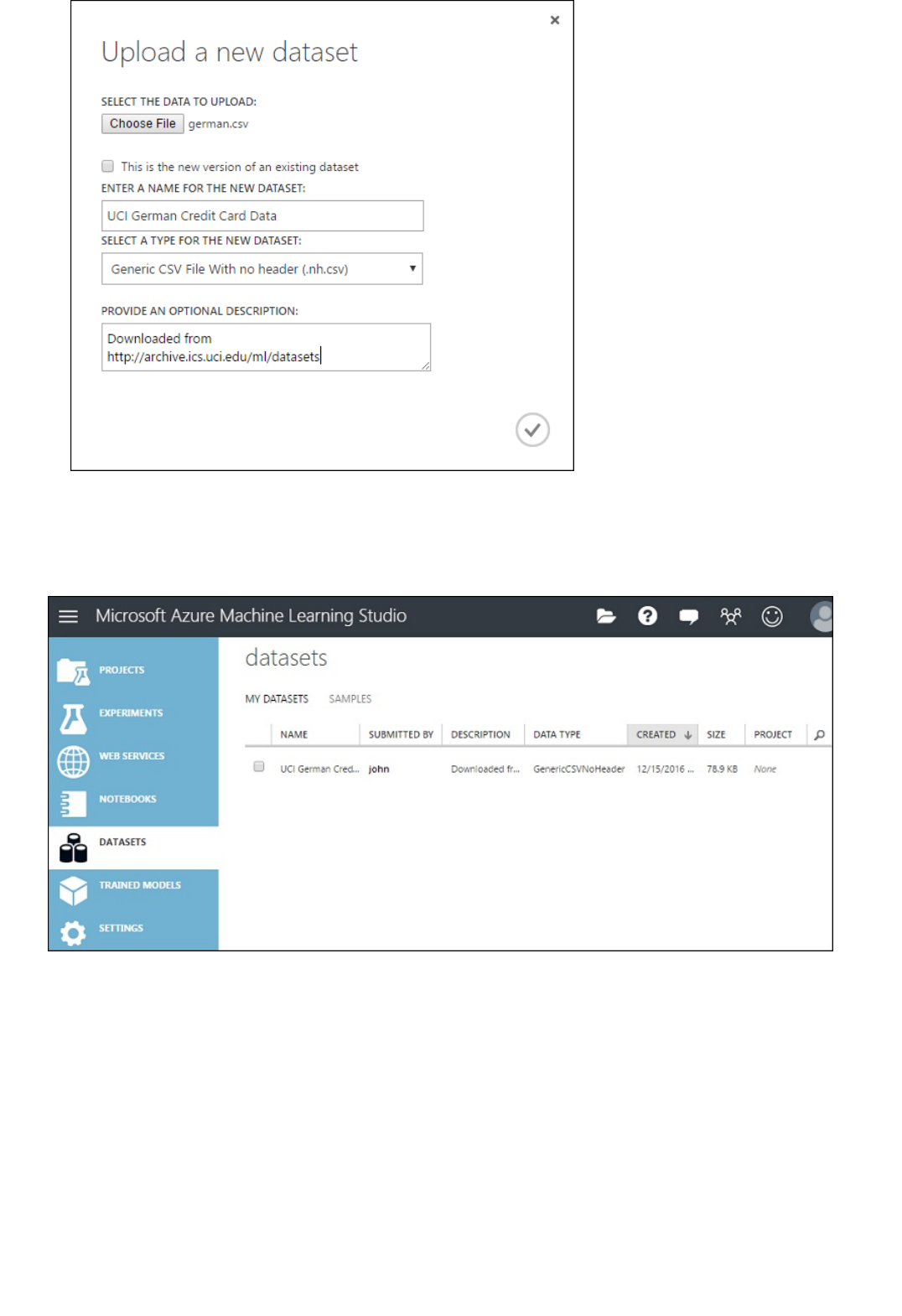

Upload a local file as a dataset

Use a module to import data from cloud data services

Import a dataset saved from another experiment

To learn more about supported file formats, see Import training data into Machine Learning Studio.

Modules in Machine Learning Studio support datasets of up to 10 GB of dense numerical data for common use

cases. If a module takes more than one input, the 10 GB value is the total of all input sizes. You can also sample

larger datasets by using queries from Hive or Azure SQL Database, or you can use Learning by Counts

preprocessing before ingestion.

The following types of data can expand to larger datasets during feature normalization and are limited to less than

10 GB:

Sparse

What are the limits for data upload?What are the limits for data upload?

ModulesModules

Data processingData processing

Categorical

Strings

Binary data

The following modules are limited to datasets less than 10 GB:

Recommender modules

Synthetic Minority Oversampling Technique (SMOTE) module

Scripting modules: R, Python, SQL

Modules where the output data size can be larger than input data size, such as Join or Feature Hashing

Cross-validation, Tune Model Hyperparameters, Ordinal Regression, and One-vs-All Multiclass, when the

number of iterations is very large

For datasets that are larger than a couple GBs, upload data to Azure Storage or Azure SQL Database, or use Azure

HDInsight rather than directly uploading from a local file.

Can I read data from Amazon S3?

If you have a small amount of data and want to expose it via an HTTP URL, then you can use the Import Data

module. For larger amounts of data, transfer it to Azure Storage first, and then use the Import Data module to

bring it into your experiment.

Is there a built-in image input capability?

You can learn about image input capability in the Import Images reference.

The algorithm, data source, data format, or data transformation operation that I am looking for isn't in

Azure Machine Learning Studio. What are my options?

You can go to the user feedback forum to see feature requests that we are tracking. Add your vote to a request if a

capability that you're looking for has already been requested. If the capability that you're looking for doesn't exist,

create a new request. You can view the status of your request in this forum, too. We track this list closely and

update the status of feature availability frequently. In addition, you can use the built-in support for R and Python to

create custom transformations when needed.

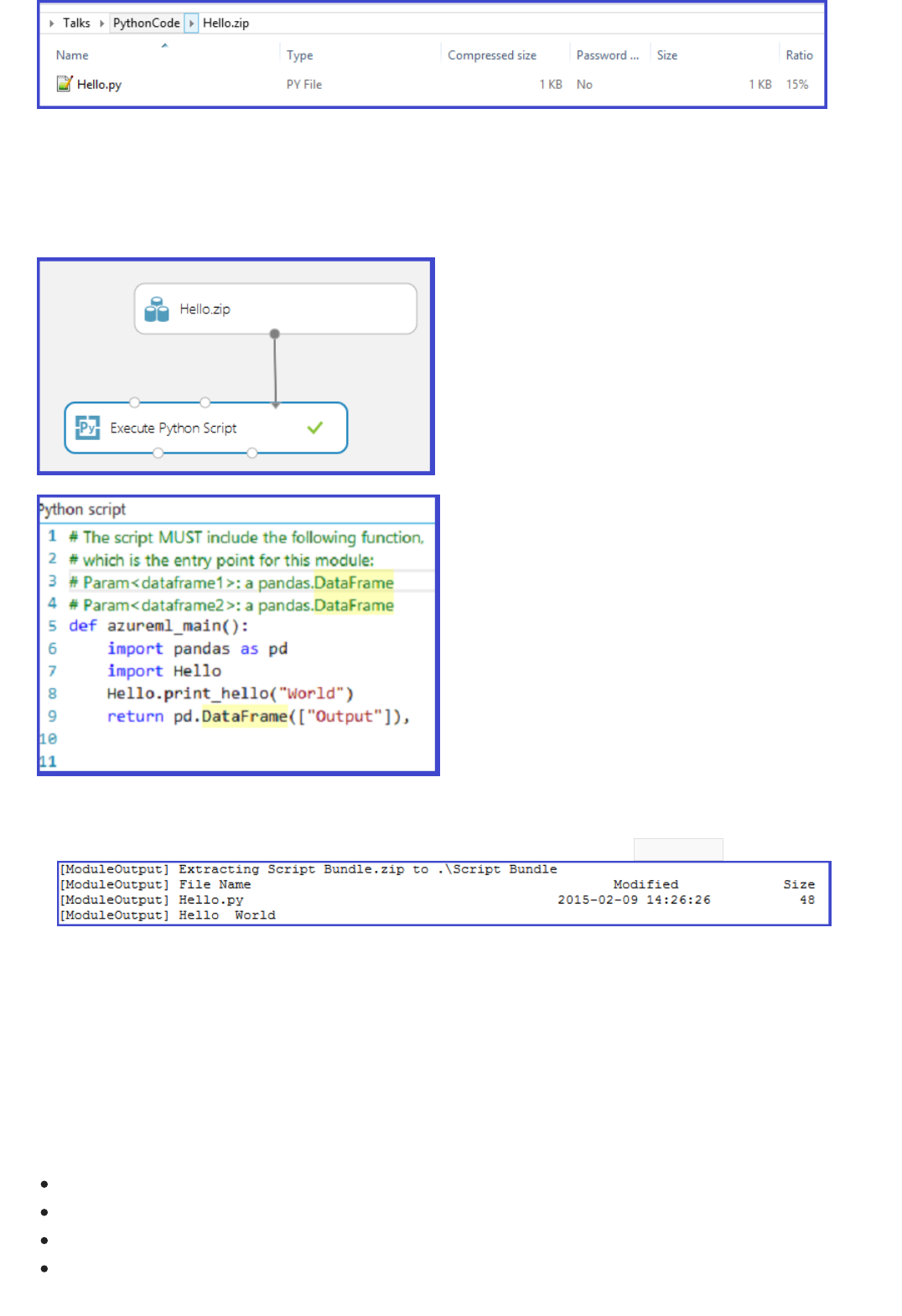

Can I bring my existing code into Machine Learning Studio?

Yes, you can bring your existing R or Python code into Machine Learning Studio, run it in the same experiment with

Azure Machine Learning learners, and deploy the solution as a web service via Azure Machine Learning. For more

information, see Extend your experiment with R and Execute Python machine learning scripts in Azure Machine

Learning Studio.

Is it possible to use something like PMML to define a model?

No, Predictive Model Markup Language (PMML) is not supported. You can use custom R and Python code to

define a module.

How many modules can I execute in parallel in my experiment?

You can execute up to four modules in parallel in an experiment.

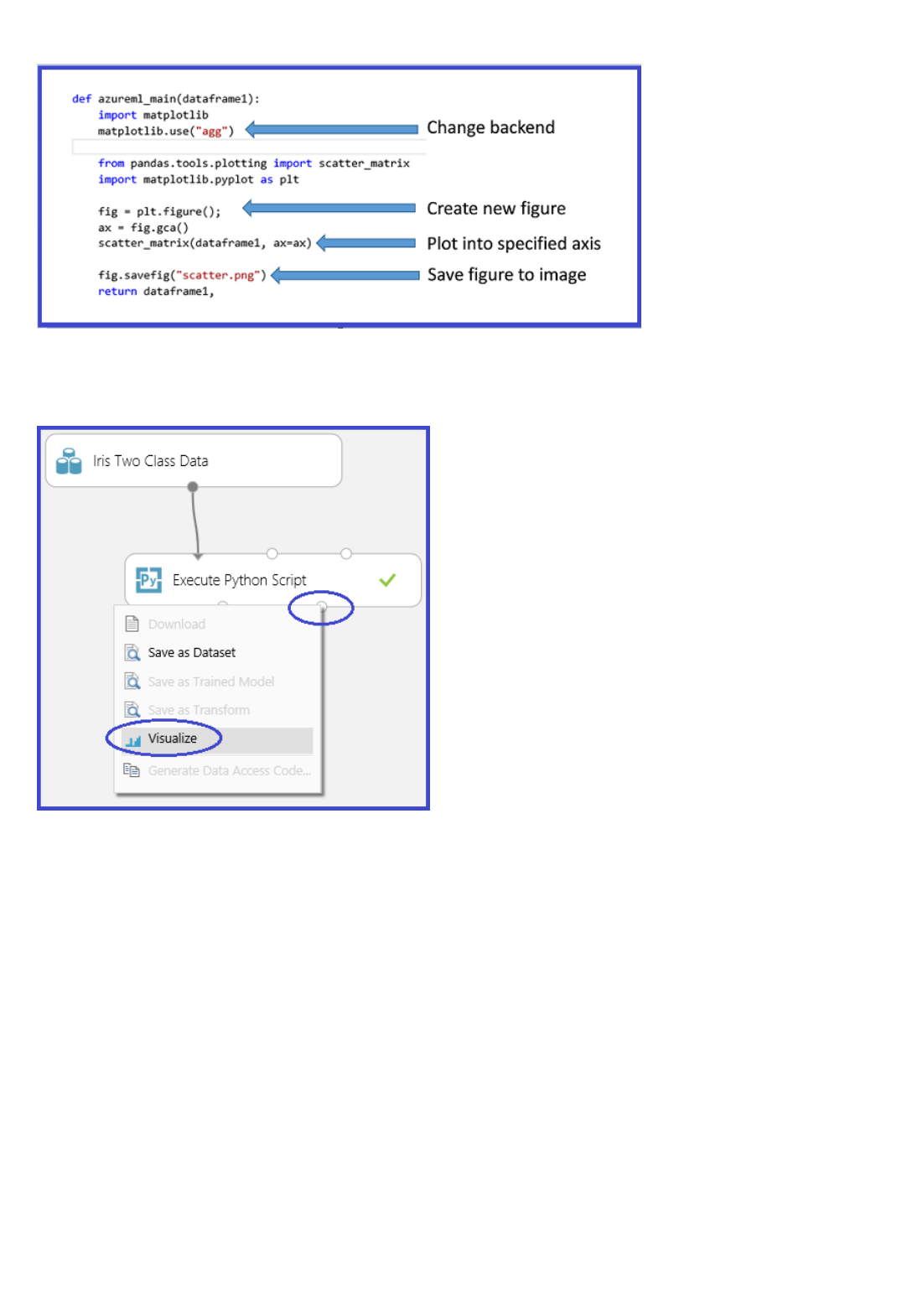

Is there an ability to visualize data (beyond R visualizations) interactively within the experiment?

Click the output of a module to visualize the data and get statistics.

When previewing results or data in a browser, the number of rows and columns is limited. Why?

AlgorithmsAlgorithms

R moduleR module

Python modulePython module

Because large amounts of data might be sent to a browser, data size is limited to prevent slowing down Machine

Learning Studio. To visualize all the data/results, it's better to download the data and use Excel or another tool.

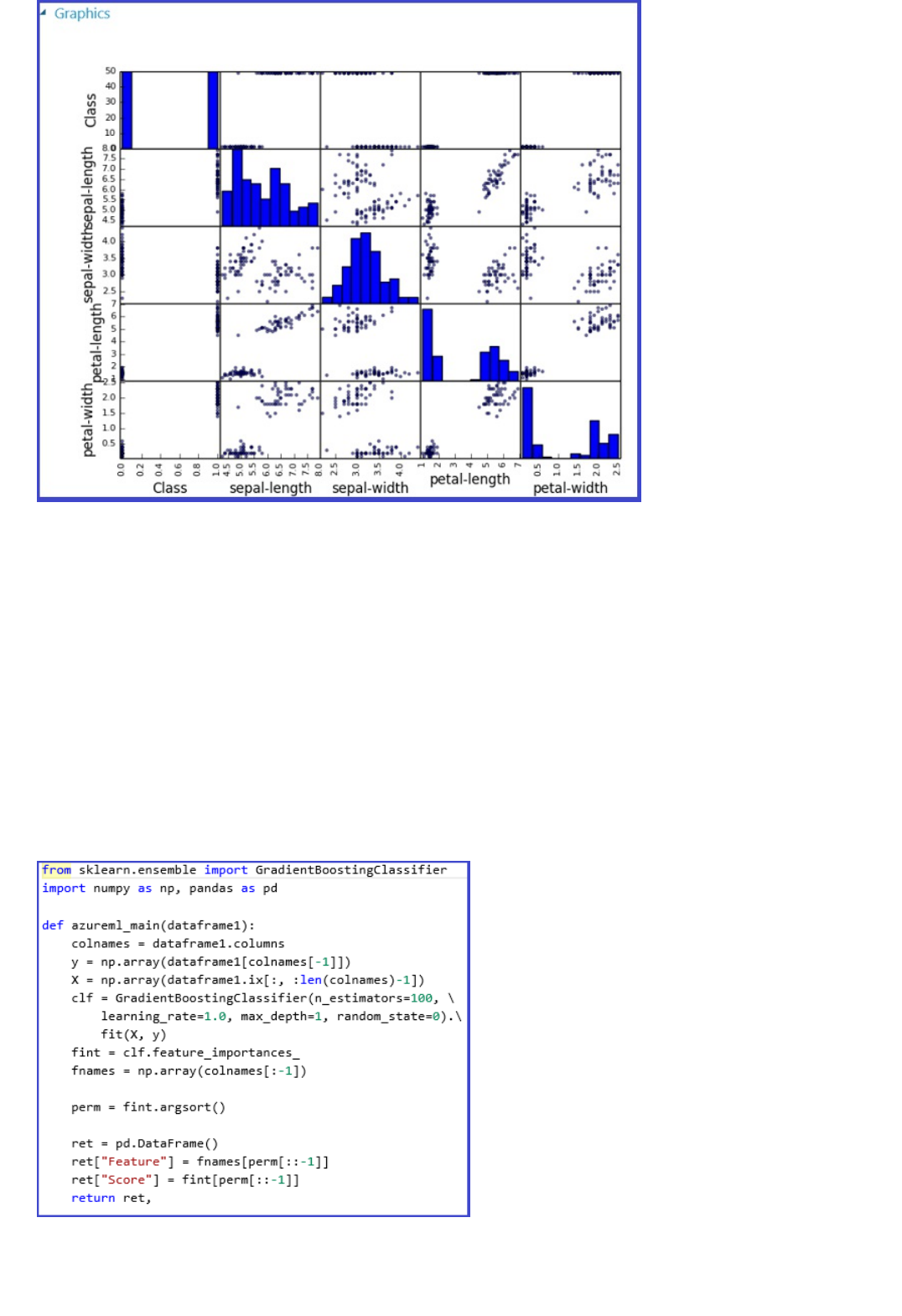

What existing algorithms are supported in Machine Learning Studio?

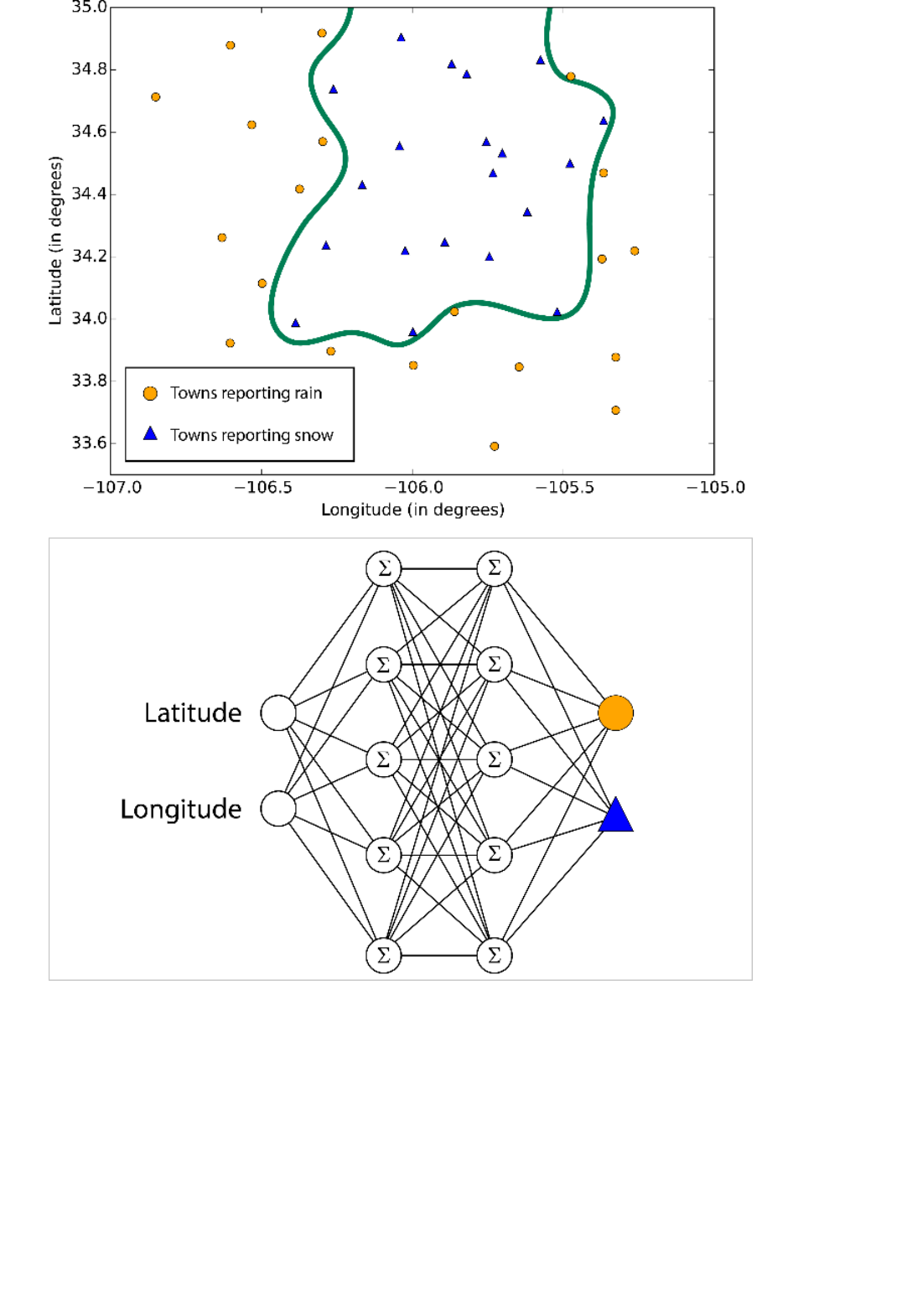

Machine Learning Studio provides state-of-the-art algorithms, such as Scalable Boosted Decision trees, Bayesian

Recommendation systems, Deep Neural Networks, and Decision Jungles developed at Microsoft Research.

Scalable open-source machine learning packages, like Vowpal Wabbit, are also included. Machine Learning Studio

supports machine learning algorithms for multiclass and binary classification, regression, and clustering. See the

complete list of Machine Learning Modules.

Do you automatically suggest the right Machine Learning algorithm to use for my data?

No, but Machine Learning Studio has various ways to compare the results of each algorithm to determine the right

one for your problem.

Do you have any guidelines on picking one algorithm over another for the provided algorithms?

See How to choose an algorithm.

Are the provided algorithms written in R or Python?

No, these algorithms are mostly written in compiled languages to provide better performance.

Are any details of the algorithms provided?

The documentation provides some information about the algorithms and parameters for tuning are described to

optimize the algorithm for your use.

Is there any support for online learning?

No, currently only programmatic retraining is supported.

Can I visualize the layers of a Neural Net Model by using the built-in module?

No.

Can I create my own modules in C# or some other language?

Currently, you can only use R to create new custom modules.

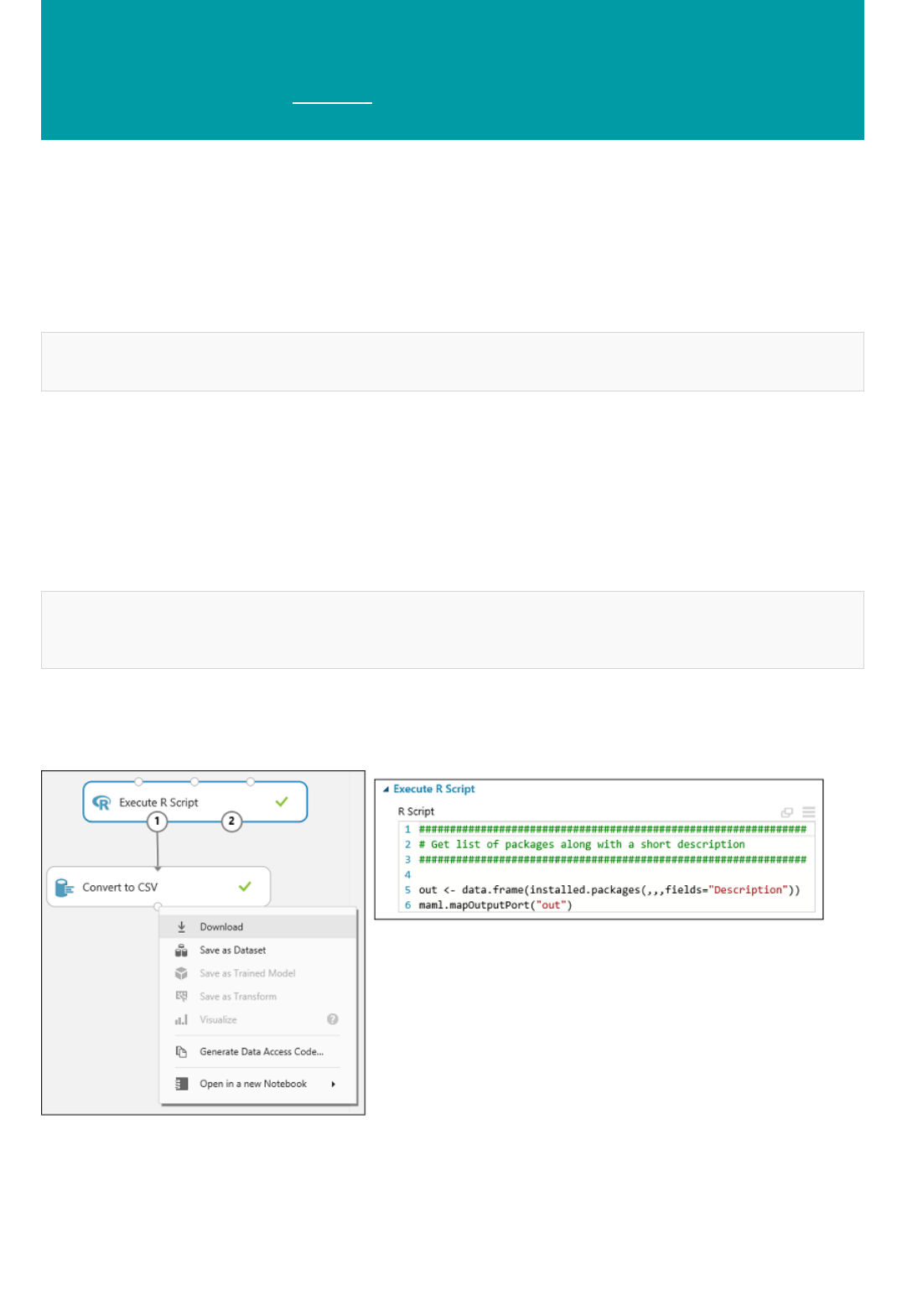

What R packages are available in Machine Learning Studio?

Machine Learning Studio supports more than 400 CRAN R packages today, and here is the current list of all

included packages. Also, see Extend your experiment with R to learn how to retrieve this list yourself. If the package

that you want is not in this list, provide the name of the package at the user feedback forum.

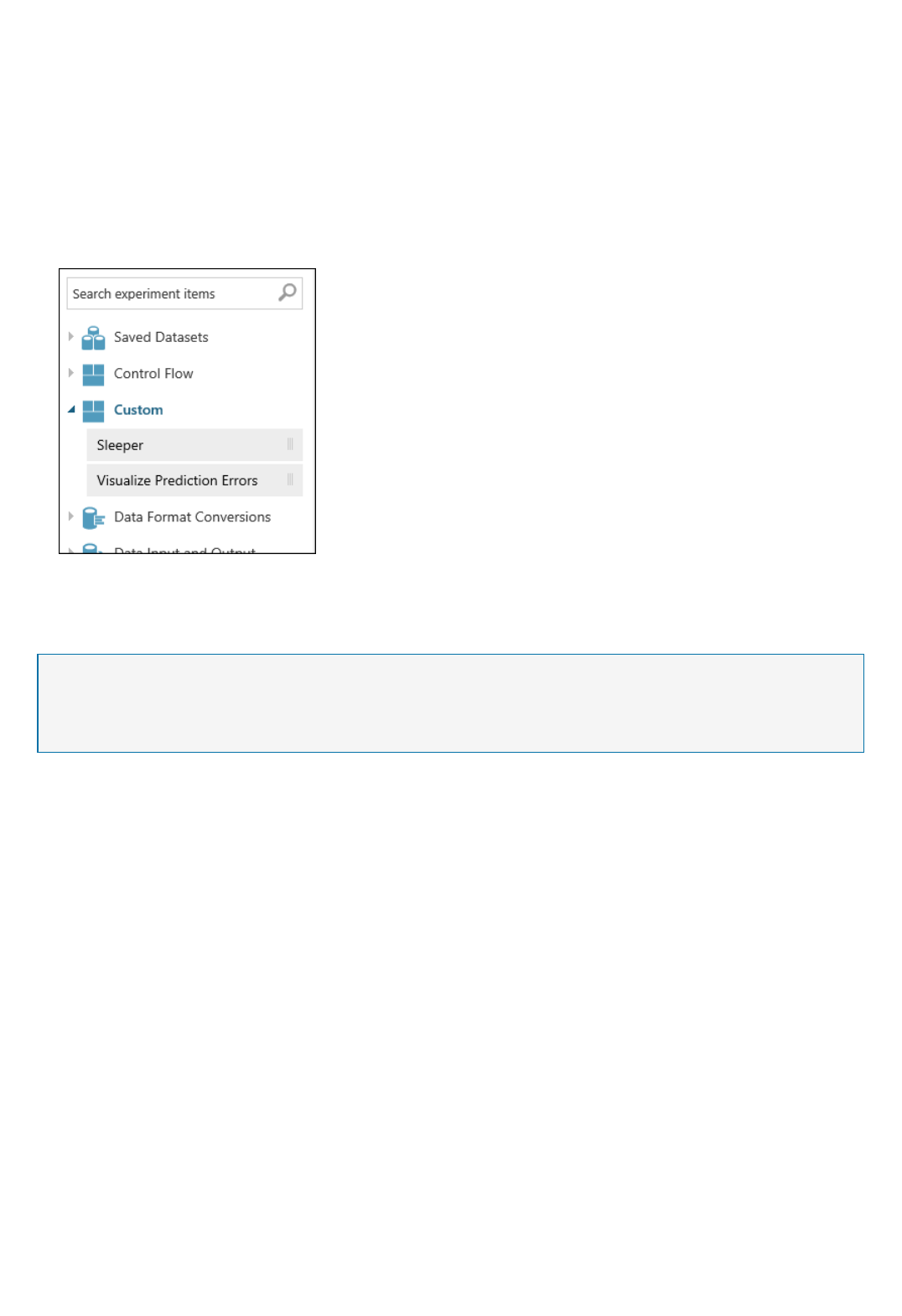

Is it possible to build a custom R module?

Yes, see Author custom R modules in Azure Machine Learning for more information.

Is there a REPL environment for R?

No, there is no Read-Eval-Print-Loop (REPL) environment for R in the studio.

Is it possible to build a custom Python module?

Not currently, but you can use one or more Execute Python Script modules to get the same result.

Web service

RetrainRetrain

CreateCreate

UseUse

Is there a REPL environment for Python?

You can use the Jupyter Notebooks in Machine Learning Studio. For more information, see Introducing Jupyter

Notebooks in Azure Machine Learning Studio.

How do I retrain Azure Machine Learning models programmatically?

Use the retraining APIs. For more information, see Retrain Machine Learning models programmatically. Sample

code is also available in the Microsoft Azure Machine Learning Retraining Demo.

Can I deploy the model locally or in an application that doesn't have an Internet connection?

No.

Is there a baseline latency that is expected for all web services?

See the Azure subscription limits.

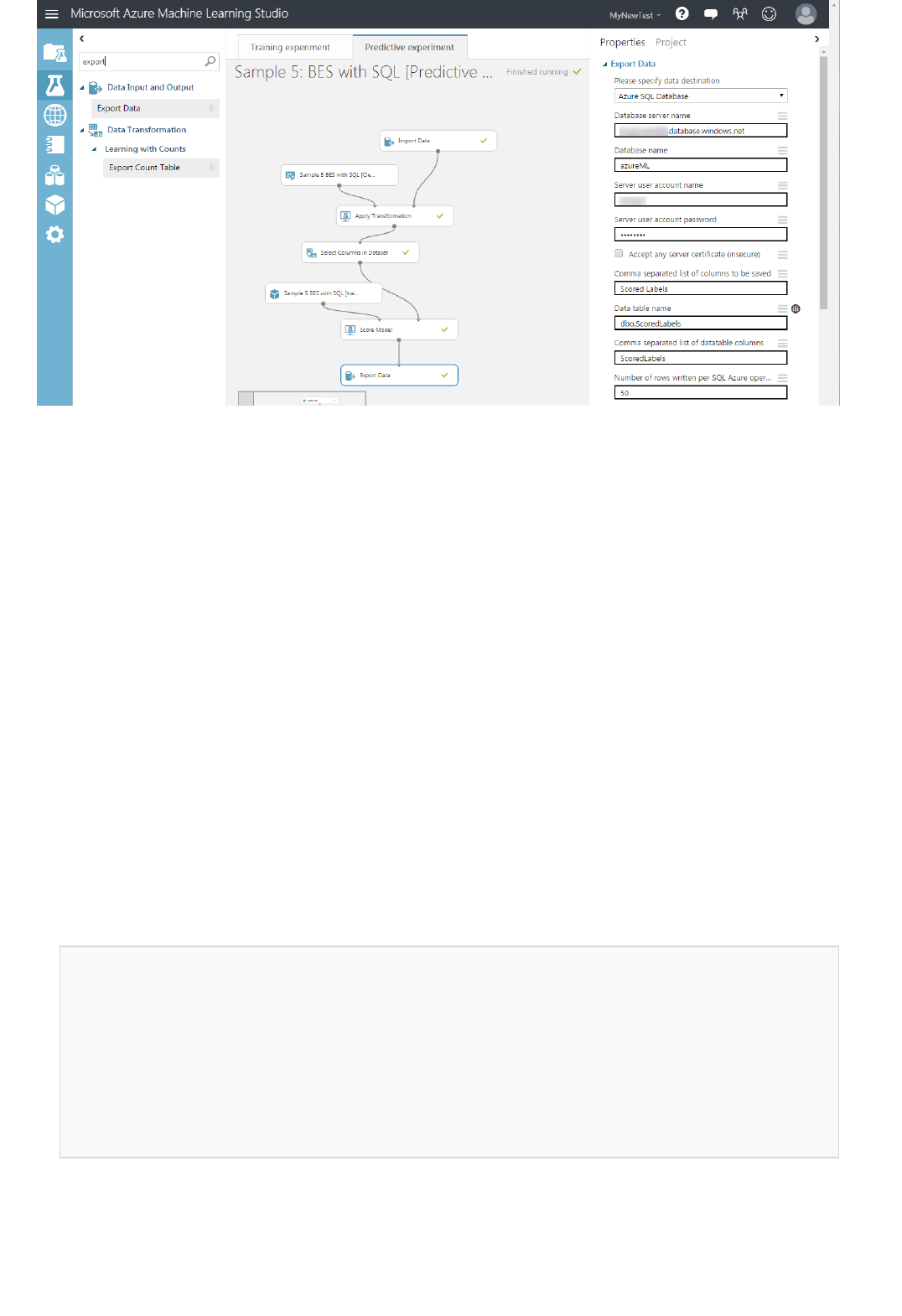

When would I want to run my predictive model as a Batch Execution service versus a Request Response

service?

The Request Response service (RRS) is a low-latency, high-scale web service that is used to provide an interface to

stateless models that are created and deployed from the experimentation environment. The Batch Execution

service (BES) is a service that asynchronously scores a batch of data records. The input for BES is like data input

that RRS uses. The main difference is that BES reads a block of records from a variety of sources, such as Azure

Blob storage, Azure Table storage, Azure SQL Database, HDInsight (hive query), and HTTP sources. For more

information, see How to consume an Azure Machine Learning Web service.

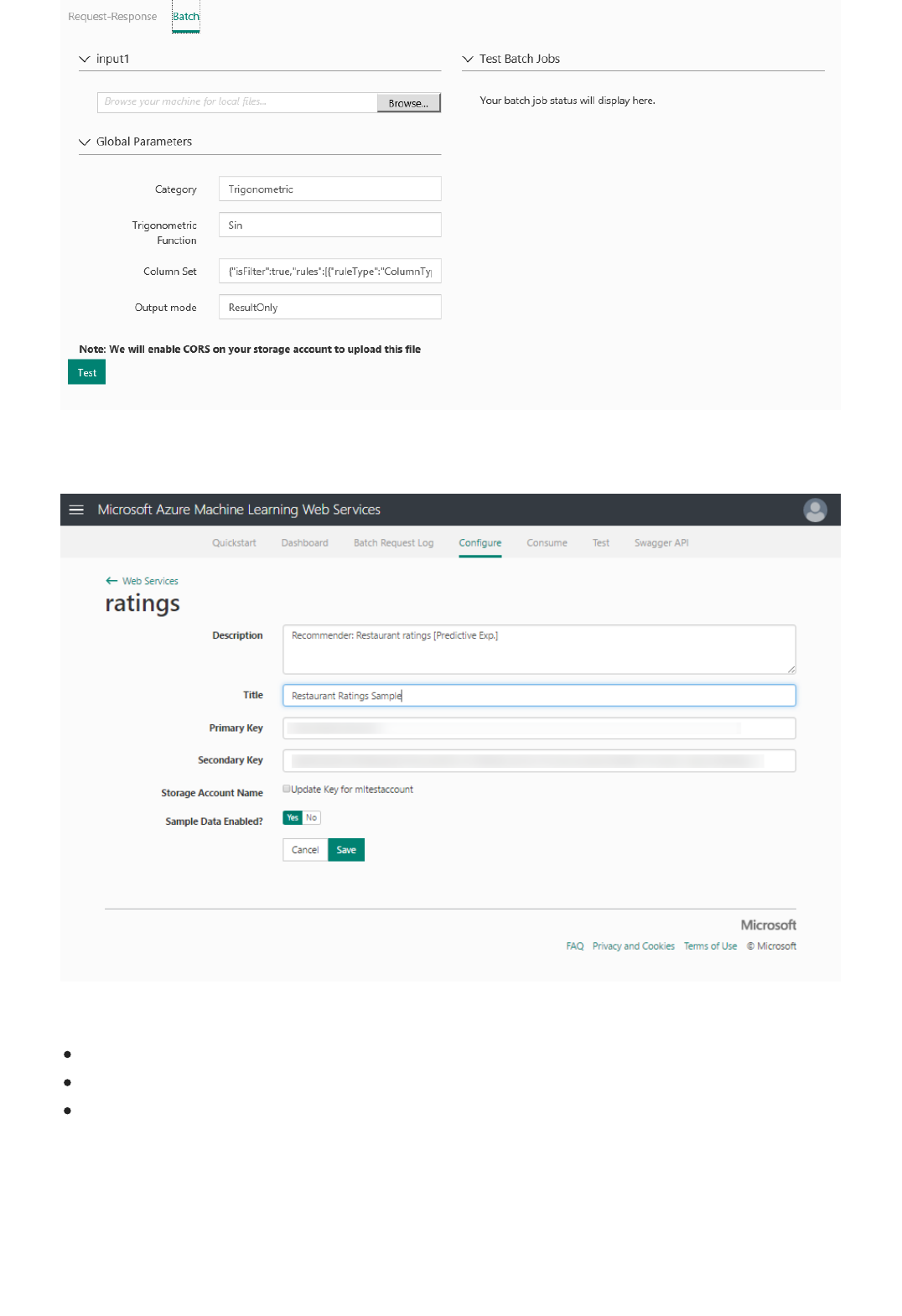

How do I update the model for the deployed web service?

To update a predictive model for an already deployed service, modify and rerun the experiment that you used to

author and save the trained model. After you have a new version of the trained model available, Machine Learning

Studio asks you if you want to update your web service. For details about how to update a deployed web service,

see Deploy a Machine Learning web service.

You can also use the Retraining APIs. For more information, see Retrain Machine Learning models

programmatically. Sample code is also available in the Microsoft Azure Machine Learning Retraining Demo.

How do I monitor my web service deployed in production?

After you deploy a predictive model, you can monitor it from the Azure Machine Learning Web Services portal.

Each deployed service has its own dashboard where you can see monitoring information for that service. For more

information about how to manage your deployed web services, see Manage a Web service using the Azure

Machine Learning Web Services portal and Manage an Azure Machine Learning workspace.

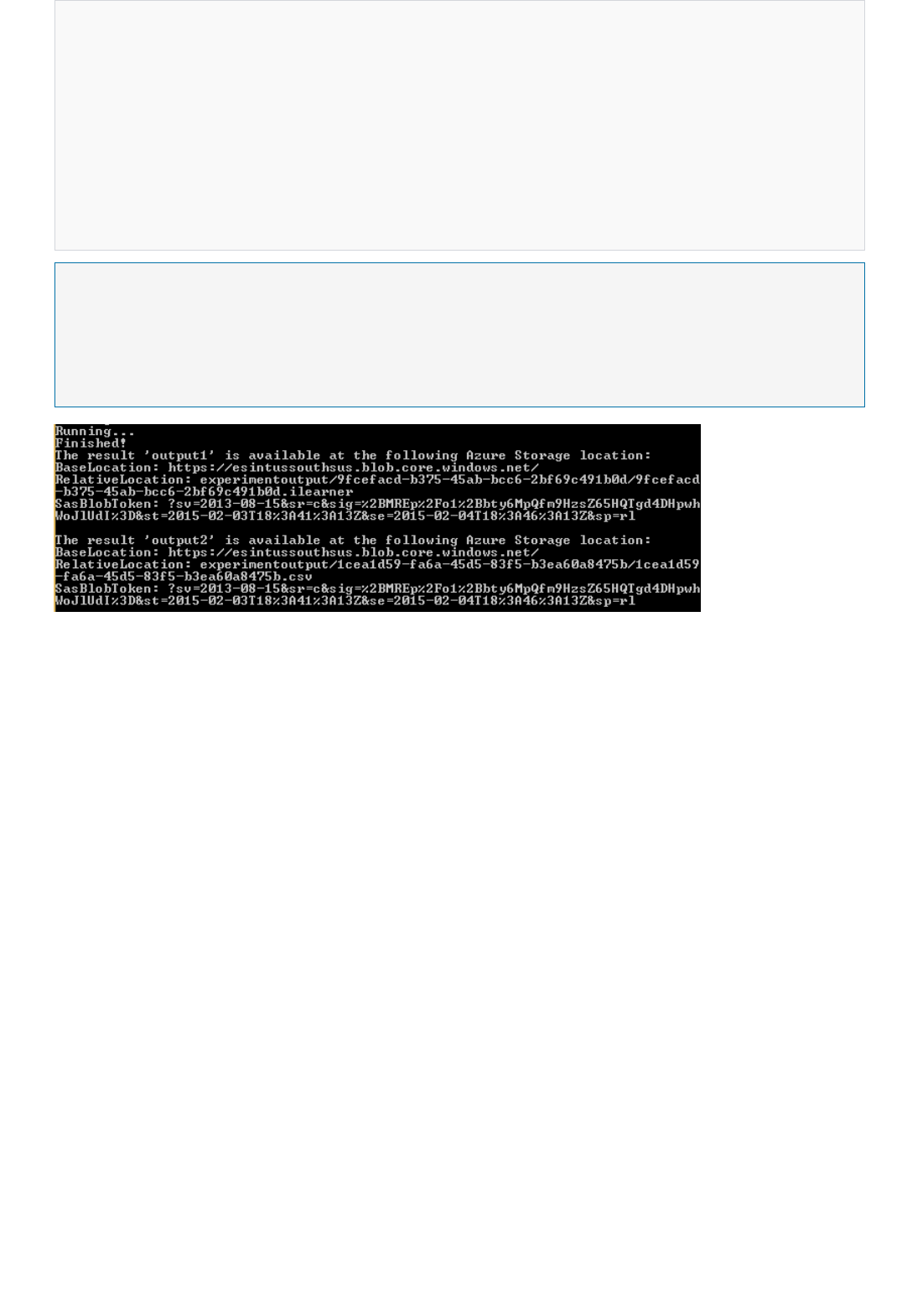

Is there a place where I can see the output of my RRS/BES?

For RRS, the web service response is typically where you see the result. You can also write it to Azure Blob storage.

For BES, the output is written to a blob by default. You can also write the output to a database or table by using the

Export Data module.

Can I create web services only from models that were created in Machine Learning Studio?

No, you can also create web services directly by using Jupyter Notebooks and RStudio.

Scalability

Security and availability

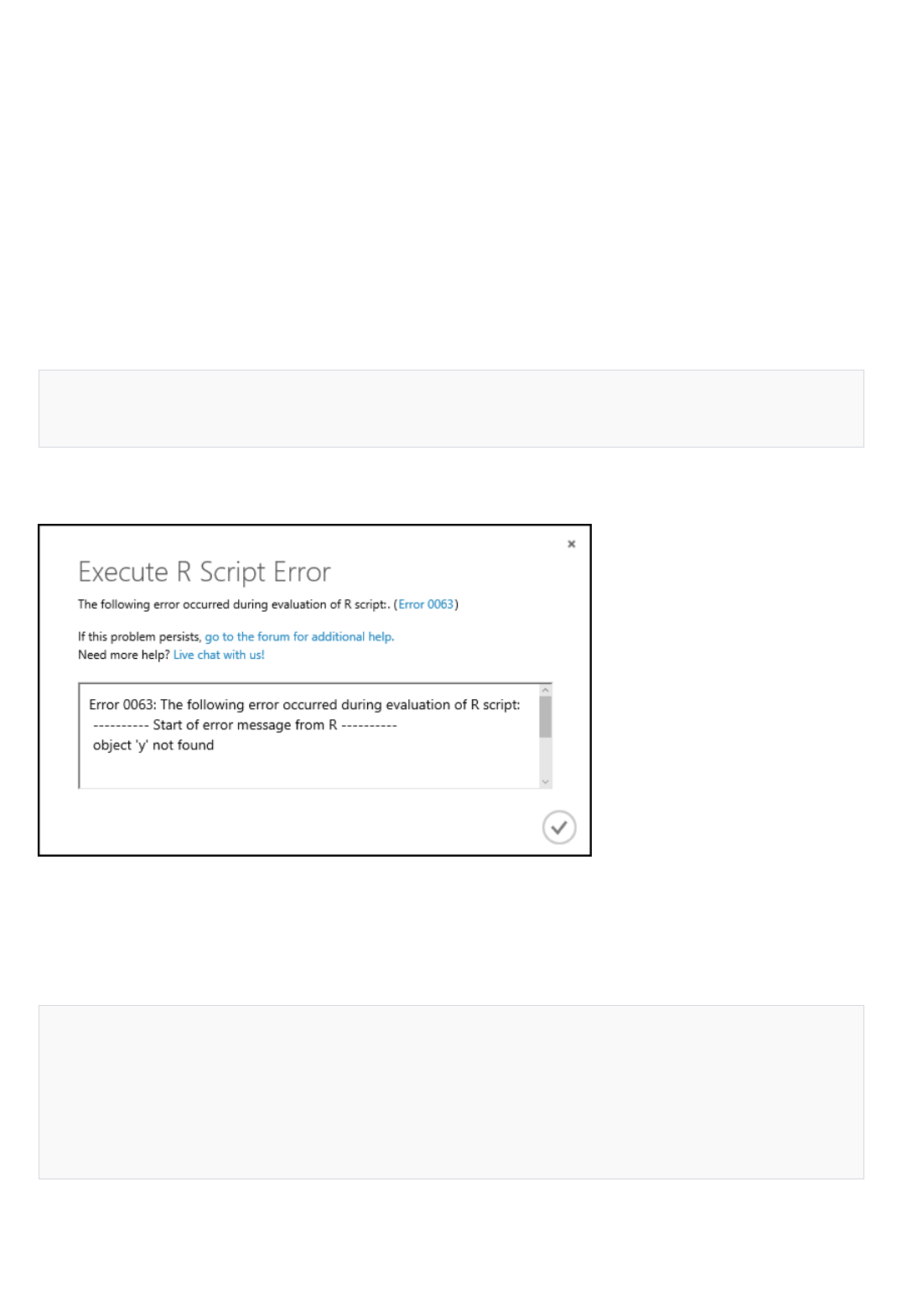

Where can I find information about error codes?

See Machine Learning Module Error Codes for a list of error codes and descriptions.

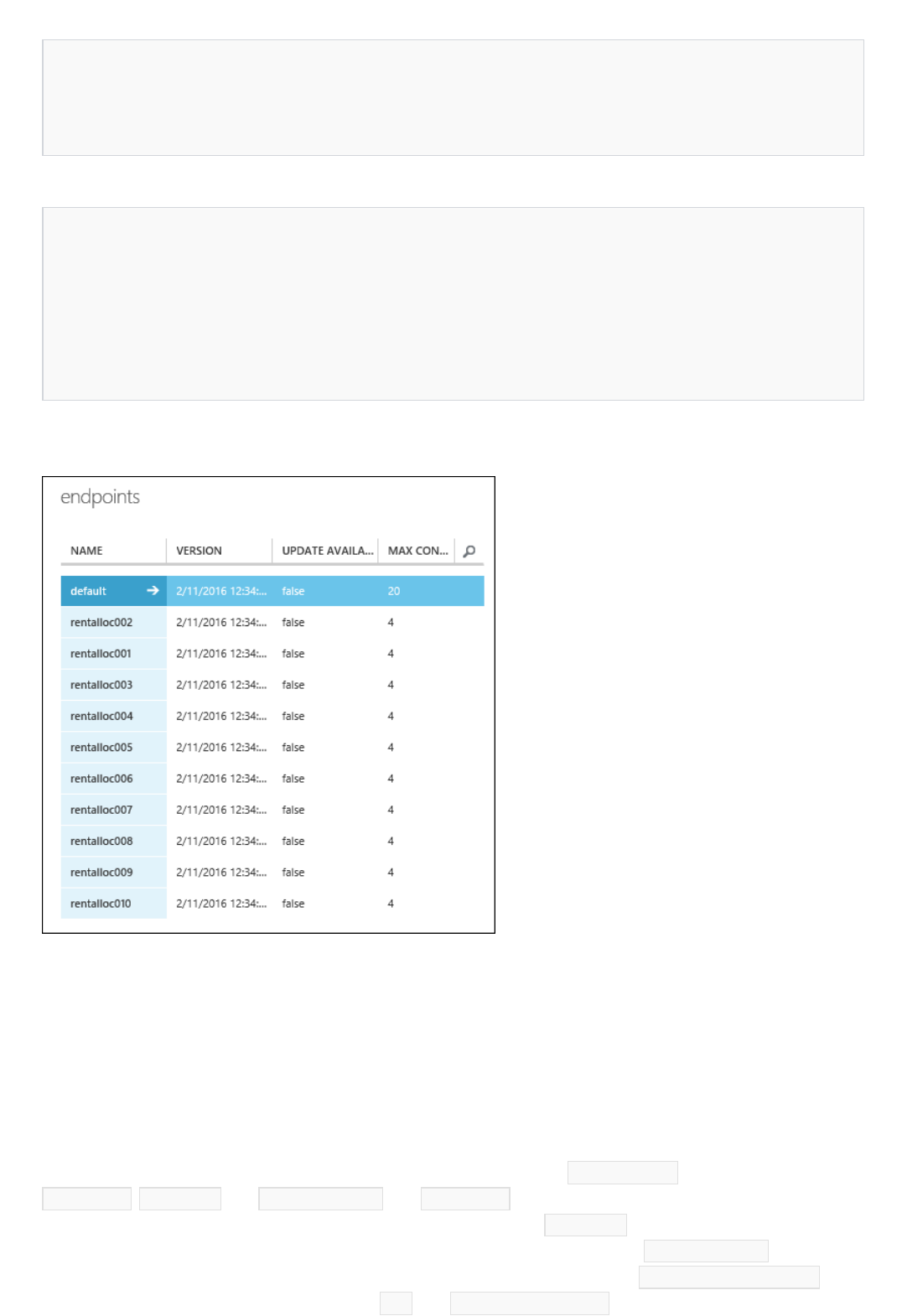

What is the scalability of the web service?

Currently, the default endpoint is provisioned with 20 concurrent RRS requests per endpoint. You can scale this to

200 concurrent requests per endpoint, and you can scale each web service to 10,000 endpoints per web service as

described in Scaling a Web Service. For BES, each endpoint can process 40 requests at a time, and additional

requests beyond 40 requests are queued. These queued requests run automatically as the queue drains.

Are R jobs spread across nodes?

No.

How much data can I use for training?

Modules in Machine Learning Studio support datasets of up to 10 GB of dense numerical data for common use

cases. If a module takes more than one input, the total size for all inputs is 10 GB. You can also sample larger

datasets via Hive queries, via Azure SQL Database queries, or by preprocessing with Learning with Counts

modules before ingestion.

The following types of data can expand to larger datasets during feature normalization and are limited to less than

10 GB:

Sparse

Categorical

Strings

Binary data

The following modules are limited to datasets less than 10 GB:

Recommender modules

Synthetic Minority Oversampling Technique (SMOTE) module

Scripting modules: R, Python, SQL

Modules where the output data size can be larger than input data size, such as Join or Feature Hashing

Cross-Validate, Tune Model Hyperparameters, Ordinal Regression, and One-vs-All Multiclass, when number of

iterations is very large

For datasets that are larger than a few GBs, upload data to Azure Storage or Azure SQL Database, or use

HDInsight rather than directly uploading from a local file.

Are there any vector size limitations?

Rows and columns are each limited to the .NET limitation of Max Int: 2,147,483,647.

Can I adjust the size of the virtual machine that runs the web service?

No.

Who can access the http endpoint for the web service by default? How do I restrict access to the

endpoint?

After a web service is deployed, a default endpoint is created for that service. The default endpoint can be called by

Support and training

Billing questions

using its API key. You can add more endpoints with their own keys from the Web Services portal or

programmatically by using the Web Service Management APIs. Access keys are needed to make calls to the web

service. For more information, see How to consume an Azure Machine Learning Web service.

What happens if my Azure storage account can't be found?

Machine Learning Studio relies on a user-supplied Azure storage account to save intermediary data when it

executes the workflow. This storage account is provided to Machine Learning Studio when a workspace is created.

After the workspace is created, if the storage account is deleted and can no longer be found, the workspace will

stop functioning, and all experiments in that workspace will fail.

If you accidentally deleted the storage account, recreate the storage account with the same name in the same

region as the deleted storage account. After that, resync the access key.

What happens if my storage account access key is out of sync?

Machine Learning Studio relies on a user-supplied Azure storage account to store intermediary data when it

executes the workflow. This storage account is provided to Machine Learning Studio when a workspace is created,

and the access keys are associated with that workspace. If the access keys are changed after the workspace is

created, the workspace can no longer access the storage account. It will stop functioning and all experiments in that

workspace will fail.

If you changed storage account access keys, resync the access keys in the workspace by using the Azure portal.

Where can I get training for Azure Machine Learning?

The Azure Machine Learning Documentation Center hosts video tutorials and how-to guides. These step-by-step

guides introduce the services and explain the data science life cycle of importing data, cleaning data, building

predictive models, and deploying them in production by using Azure Machine Learning.

We add new material to the Machine Learning Center on an ongoing basis. You can submit requests for additional

learning material on Machine Learning Center at the user feedback forum.

You can also find training at Microsoft Virtual Academy.

How do I get support for Azure Machine Learning?

To get technical support for Azure Machine Learning, go to Azure Support, and select Machine Learning.

Azure Machine Learning also has a community forum on MSDN where you can ask questions about Azure

Machine Learning. The Azure Machine Learning team monitors the forum. Go to Azure Forum.

How does Machine Learning billing work?

Azure Machine Learning has two components: Machine Learning Studio and Machine Learning web services.

While you are evaluating Machine Learning Studio, you can use the Free billing tier. The Free tier also lets you

deploy a Classic web service that has limited capacity.

If you decide that Azure Machine Learning meets your needs, you can sign up for the Standard tier. To sign up, you

must have a Microsoft Azure subscription.

In the Standard tier, you are billed monthly for each workspace that you define in Machine Learning Studio. When

you run an experiment in the studio, you are billed for compute resources when you are running an experiment.

When you deploy a Classic web service, transactions and compute hours are billed on the Pay As You Go basis.

NOTENOTE

New (Resource Manager-based) web services introduce billing plans that allow for more predictability in costs.

Tiered pricing offers discounted rates to customers who need a large amount of capacity.

When you create a plan, you commit to a fixed cost that comes with an included quantity of API compute hours

and API transactions. If you need more included quantities, you can add instances to your plan. If you need a lot

more included quantities, you can choose a higher tier plan that provides considerably more included quantities

and a better discounted rate.

After the included quantities in existing instances are used up, additional usage is charged at the overage rate that's

associated with the billing plan tier.

Included quantities are reallocated every 30 days, and unused included quantities do not roll over to the next period.

For additional billing and pricing information, see Machine Learning Pricing.

Does Machine Learning have a free trial?

Azure Machine Learning has a free subscription option that's explained in Machine Learning Pricing. Machine

Learning Studio has an eight-hour quick evaluation trial that's available when you sign in to Machine Learning

Studio.

In addition, when you sign up for an Azure free trial, you can try any Azure services for a month. To learn more

about the Azure free trial, visit Azure free trial FAQ.

What is a transaction?

A transaction represents an API call that Azure Machine Learning responds to. Transactions from Request-

Response Service (RRS) and Batch Execution Service (BES) calls are aggregated and charged against your billing

plan.

Can I use the included transaction quantities in a plan for both RRS and BES transactions?

Yes, your transactions from your RRS and BES are aggregated and charged against your billing plan.

What is an API compute hour?

An API compute hour is the billing unit for the time that API calls take to run by using Machine Learning compute

resources. All your calls are aggregated for billing purposes.

How long does a typical production API call take?

Production API call times can vary significantly, generally ranging from hundreds of milliseconds to a few seconds.

Some API calls might require minutes depending on the complexity of the data processing and machine-learning

model. The best way to estimate production API call times is to benchmark a model on the Machine Learning

service.

What is a Studio compute hour?

A Studio compute hour is the billing unit for the aggregate time that your experiments use compute resources in

studio.

In New (Azure Resource Manager-based) web services, what is the Dev/Test tier meant for?

Resource Manager-based web services provide multiple tiers that you can use to provision your billing plan. The

Dev/Test pricing tier provides limited, included quantities that allow you to test your experiment as a web service

without incurring costs. You have the opportunity to see how it works.

Are there separate storage charges?

Management of New (Resource Manager-based) web servicesManagement of New (Resource Manager-based) web services

NOTENOTE

NOTENOTE

NOTENOTE

Sign up for New (Resource Manager-based) web services plansSign up for New (Resource Manager-based) web services plans

The Machine Learning Free tier does not require or allow separate storage. The Machine Learning Standard tier

requires users to have an Azure storage account. Azure Storage is billed separately.

Does Machine Learning support high availability?

Yes. For details, see Machine Learning Pricing for a description of the service level agreement (SL A).

What specific kind of compute resources will my production API calls be run on?

The Machine Learning service is a multitenant service. Actual compute resources that are used on the back end

vary and are optimized for performance and predictability.

What happens if I delete my plan?

The plan is removed from your subscription, and you are billed for prorated usage.

You cannot delete a plan that a web service is using. To delete the plan, you must either assign a new plan to the web service

or delete the web service.

What is a plan instance?

A plan instance is a unit of included quantities that you can add to your billing plan. When you select a billing tier

for your billing plan, it comes with one instance. If you need more included quantities, you can add instances of the

selected billing tier to your plan.

How many plan instances can I add?

You can have one instance of the Dev/Test pricing tier in a subscription.

For Standard S1, Standard S2, and Standard S3 tiers, you can add as many as necessary.

Depending on your anticipated usage, it might be more cost effective to upgrade to a tier that has more included quantities

rather than add instances to the current tier.

What happens when I change plan tiers (upgrade / downgrade)?

The old plan is deleted and the current usage is billed on a prorated basis. A new plan with the full included

quantities of the upgraded/downgraded tier is created for the rest of the period.

Included quantities are allocated per period, and unused quantities do not roll over.

What happens when I increase the instances in a plan?

Quantities are included on a prorated basis and may take 24 hours to be effective.

What happens when I delete an instance of a plan?

The instance is removed from your subscription, and you are billed for prorated usage.

How do I sign up for a plan?

New web services: OveragesNew web services: Overages

You have two ways to create billing plans.

When you first deploy a Resource Manager-based web service, you can choose an existing plan or create a new

plan.

Plans that you create in this manner are in your default region, and your web service will be deployed to that

region.

If you want to deploy services to regions other than your default region, you may want to define your billing plans

before you deploy your service.

In that case, you can sign in to the Azure Machine Learning Web Services portal, and go to the Plans page. From

there, you can add plans, delete plans, and modify existing plans.

Which plan should I choose to start off with?

We recommend you that you start with the Standard S1 tier and monitor your service for usage. If you find that

you are using your included quantities rapidly, you can add instances or move to a higher tier and get better

discounted rates. You can adjust your billing plan as needed throughout your billing cycle.

Which regions are the new plans available in?

The new billing plans are available in the three production regions in which we support the new web services:

South Central US

West Europe

South East Asia

I have web services in multiple regions. Do I need a plan for every region?

Yes. Plan pricing varies by region. When you deploy a web service to another region, you need to assign it a plan

that is specific to that region. For more information, see Products available by region.

How do I check if I exceeded my web service usage?

You can view the usage on all your plans on the Plans page in the Azure Machine Learning Web Services portal.

Sign in to the portal, and then click the Plans menu option.

In the Transactions and Compute columns of the table, you can see the included quantities of the plan and the

percentage used.

What happens when I use up the include quantities in the Dev/Test pricing tier?

Services that have a Dev/Test pricing tier assigned to them are stopped until the next period or until you move

them to a paid tier.

For Classic web services and overages of New (Resource Manager-based) web services, how are prices

calculated for Request Response (RRS) and Batch (BES) workloads?

For an RRS workload, you are charged for every API transaction call that you make and for the compute time

that's associated with those requests. Your RRS production API transaction costs are calculated as the total number

of API calls that you make multiplied by the price per 1,000 transactions (prorated by individual transaction). Your

RRS API production API compute hour costs are calculated as the amount of time required for each API call to run,

multiplied by the total number of API transactions, multiplied by the price per production API compute hour.

For example, for Standard S1 overage, 1,000,000 API transactions that take 0.72 seconds each to run would result

in (1,000,000 * $0.50/1K API transactions) in $500 in production API transaction costs and (1,000,000 * 0.72 sec *

$2/hr) $400 in production API compute hours, for a total of $900.

Azure Machine Learning Classic web servicesAzure Machine Learning Classic web services

Azure Machine Learning Free and Standard tierAzure Machine Learning Free and Standard tier

For a BES workload, you are charged in the same manner. However, the API transaction costs represent the

number of batch jobs that you submit, and the compute costs represent the compute time that's associated with

those batch jobs. Your BES production API transaction costs are calculated as the total number of jobs submitted

multiplied by the price per 1,000 transactions (prorated by individual transaction). Your BES API production API

compute hour costs are calculated as the amount of time required for each row in your job to run multiplied by the

total number of rows in your job multiplied by the total number of jobs multiplied by the price per production API

compute hour. When you use the Machine Learning calculator, the transaction meter represents the number of jobs

that you plan to submit, and the time-per-transaction field represents the combined time that's needed for all rows

in each job to run.

For example, assume Standard S1 overage, and you submit 100 jobs per day that each consist of 500 rows that

take 0.72 seconds each. Your monthly overage costs would be (100 jobs per day = 3,100 jobs/mo * $0.50/1K API

transactions) $1.55 in production API transaction costs and (500 rows * 0.72 sec * 3,100 Jobs * $2/hr) $620 in

production API compute hours, for a total of $621.55.

Is Pay As You Go still available?

Yes, Classic web services are still available in Azure Machine Learning.

What is included in the Azure Machine Learning Free tier?

The Azure Machine Learning Free tier is intended to provide an in-depth introduction to the Azure Machine

Learning Studio. All you need is a Microsoft account to sign up. The Free tier includes free access to one Azure

Machine Learning Studio workspace per Microsoft account. In this tier, you can use up to 10 GB of storage and

operationalize models as staging APIs. Free tier workloads are not covered by an SL A and are intended for

development and personal use only.

Free tier workspaces have the following limitations:

Workloads can't access data by connecting to an on-premises server that runs SQL Server.

You cannot deploy New Resource Manager base web services.

What is included in the Azure Machine Learning Standard tier and plans?

The Azure Machine Learning Standard tier is a paid production version of Azure Machine Learning Studio. The

Azure Machine Learning Studio monthly fee is billed on a per workspace per month basis and prorated for partial

months. Azure Machine Learning Studio experiment hours are billed per compute hour for active experimentation.

Billing is prorated for partial hours.

The Azure Machine Learning API service is billed depending on whether it's a Classic web service or a New

(Resource Manager-based) web service.

The following charges are aggregated per workspace for your subscription.

Machine Learning Workspace Subscription: The Machine Learning workspace subscription is a monthly fee that

provides access to a Machine Learning Studio workspace. The subscription is required to run experiments in the

studio and to utilize the production APIs.

Studio Experiment hours: This meter aggregates all compute charges that are accrued by running experiments

in Machine Learning Studio and running production API calls in the staging environment.

Access data by connecting to an on-premises server that runs SQL Server in your models for your training and

scoring.

For Classic web services:

Production API Compute Hours: This meter includes compute charges that are accrued by web services

running in production.

Production API Transactions (in 1000s): This meter includes charges that are accrued per call to your

production web service.

Apart from the preceding charges, in the case of Resource Manager-based web service, charges are aggregated to

the selected plan:

Standard S1/S2/S3 API Plan (Units): This meter represents the type of instance that's selected for Resource

Manager-based web services.

Standard S1/S2/S3 Overage API Compute Hours: This meter includes compute charges that are accrued by

Resource Manager-based web services that run in production after the included quantities in existing instances

are used up. The additional usage is charged at the overate rate that's associated with S1/S2/S3 plan tier.

Standard S1/S2/S3 Overage API Transactions (in 1,000s): This meter includes charges that are accrued per call

to your production Resource Manager-based web service after the included quantities in existing instances are

used up. The additional usage is charged at the overate rate associated with S1/S2/S3 plan tier.

Included Quantity API Compute Hours: With Resource Manager-based web services, this meter represents the

included quantity of API compute hours.

Included Quantity API Transactions (in 1,000s): With Resource Manager-based web services, this meter

represents the included quantity of API transactions.

How do I sign up for Azure Machine Learning Free tier?

All you need is a Microsoft account. Go to Azure Machine Learning home, and then click Start Now. Sign in with

your Microsoft account and a workspace in Free tier is created for you. You can start to explore and create Machine

Learning experiments right away.

How do I sign up for Azure Machine Learning Standard tier?

You must first have access to an Azure subscription to create a Standard Machine Learning workspace. You can

sign up for a 30-day free trial Azure subscription and later upgrade to a paid Azure subscription, or you can

purchase a paid Azure subscription outright. You can then create a Machine Learning workspace from the

Microsoft Azure portal after you gain access to the subscription. View the step-by-step instructions.

Alternatively, you can be invited by a Standard Machine Learning workspace owner to access the owner's

workspace.

Can I specify my own Azure Blob storage account to use with the Free tier?

No, the Standard tier is equivalent to the version of the Machine Learning service that was available before the

tiers were introduced.

Can I deploy my machine learning models as APIs in the Free tier?

Yes, you can operationalize machine learning models to staging API services as part of the Free tier. To put the

staging API service into production and get a production endpoint for the operationalized service, you must use

the Standard tier.

What is the difference between Azure free trial and Azure Machine Learning Free tier?

The Microsoft Azure free trial offers credits that you can apply to any Azure service for one month. The Azure

Machine Learning Free tier offers continuous access specifically to Azure Machine Learning for non-production

workloads.

How do I move an experiment from the Free tier to the Standard tier?

To copy your experiments from the Free tier to the Standard tier:

1. Sign in to Azure Machine Learning Studio, and make sure that you can see both the Free workspace and the

Standard workspace in the workspace selector in the top navigation bar.

Studio workspaceStudio workspace

Guest AccessGuest Access

2. Switch to Free workspace if you are in the Standard workspace.

3. In the experiment list view, select an experiment that you'd like to copy, and then click the Copy command

button.

4. Select the Standard workspace from the dialog box that opens, and then click the Copy button. All the

associated datasets, trained model, etc. are copied together with the experiment into the Standard workspace.

5. You need to rerun the experiment and republish your web service in the Standard workspace.

Will I see different bills for different workspaces?

Workspace charges are broken out separately for each applicable meter on a single bill.

What specific kind of compute resources will my experiments be run on?

The Machine Learning service is a multitenant service. Actual compute resources that are used on the back end

vary and are optimized for performance and predictability.

What is Guest Access to Azure Machine Learning Studio?

Guest Access is a restricted trial experience. You can create and run experiments in Azure Machine Learning Studio

at no cost and without authentication. Guest sessions are non-persistent (cannot be saved) and limited to eight

hours. Other limitations include lack of support for R and Python, lack of staging APIs, and restricted dataset size

and storage capacity. By comparison, users who choose to sign in with a Microsoft account have full access to the

Free tier of Machine Learning Studio that's described previously, which includes a persistent workspace and more

comprehensive capabilities. To choose your free Machine Learning experience, click Get started on

https://studio.azureml.net, and then select Guess Access or sign in with a Microsoft account.

What's New in Azure Machine Learning

3/21/2018 • 1 min to read • Edit Online

The March 2017 release of Microsoft Azure Machine Learning updates provides the following feature:The March 2017 release of Microsoft Azure Machine Learning updates provides the following feature:

The August 2016 release of Microsoft Azure Machine Learning updates provide the following features:The August 2016 release of Microsoft Azure Machine Learning updates provide the following features:

The July 2016 release of Microsoft Azure Machine Learning updates provide the following features:The July 2016 release of Microsoft Azure Machine Learning updates provide the following features:

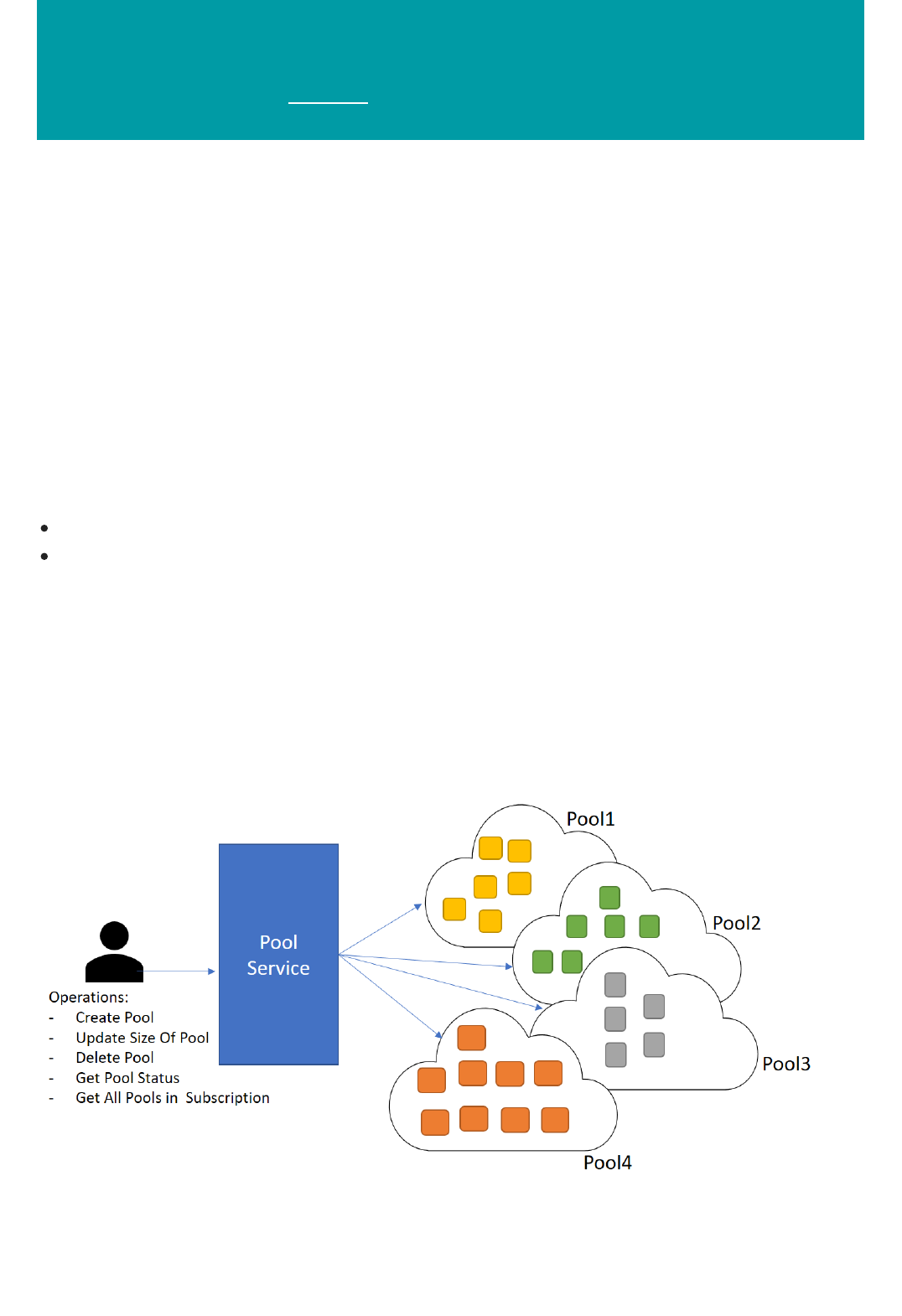

Dedicated Capacity for Azure Machine Learning BES Jobs

Machine Learning Batch Pool processing uses the Azure Batch service to provide customer-managed scale

for the Azure Machine Learning Batch Execution Service. Batch Pool processing allows you to create Azure

Batch pools on which you can submit batch jobs and have them execute in a predictable manner.

For more information, see Azure Batch service for Machine Learning jobs.

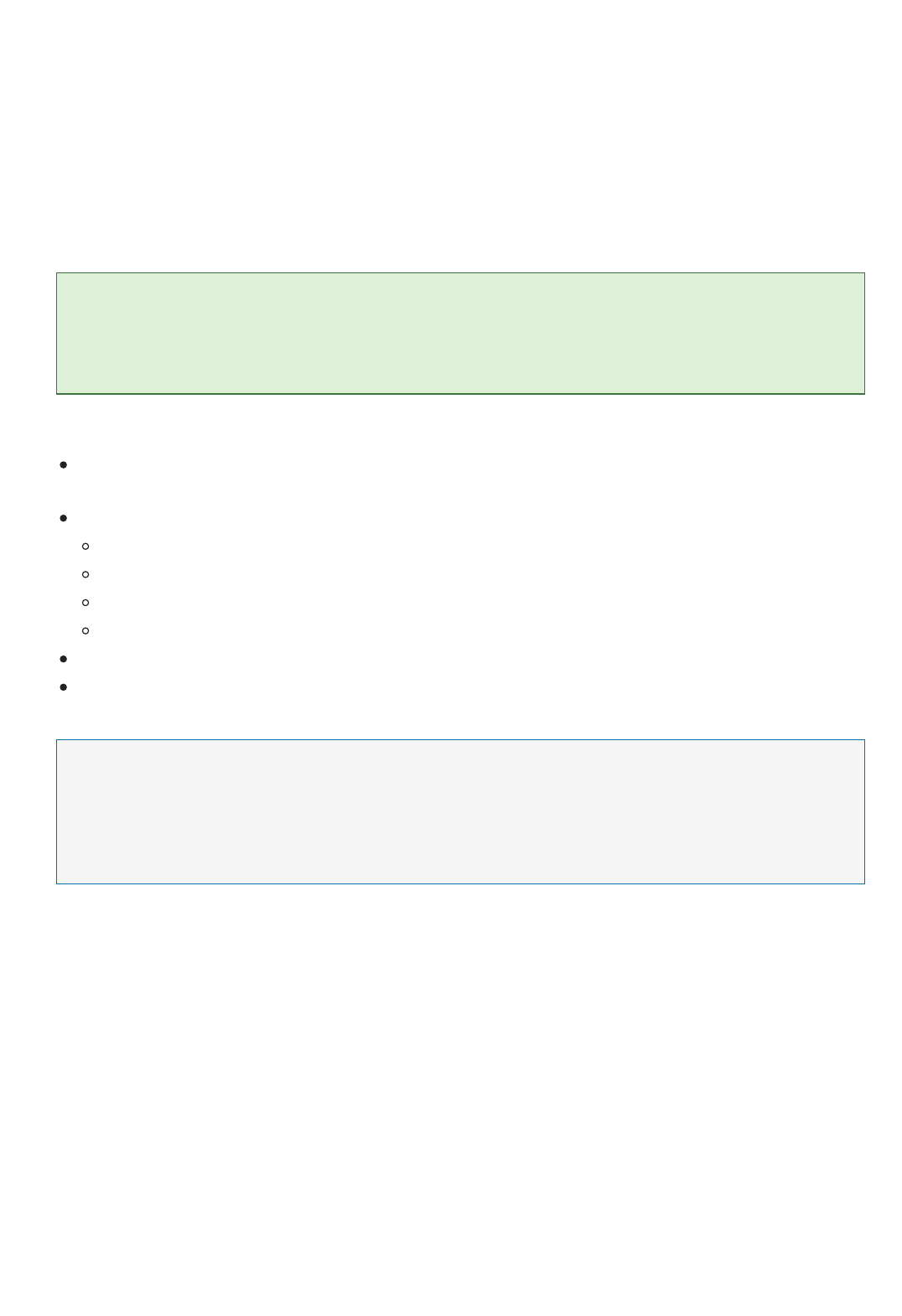

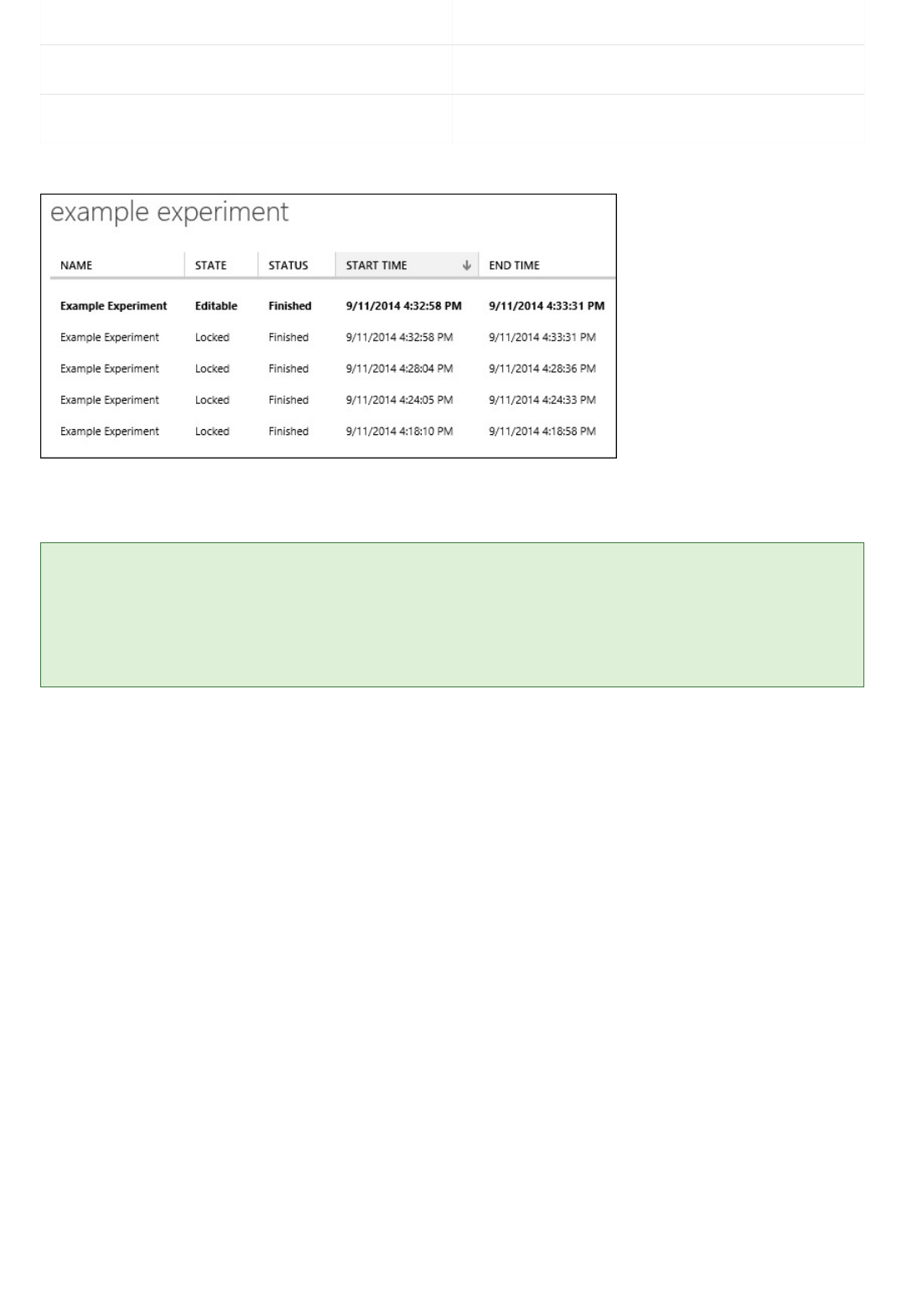

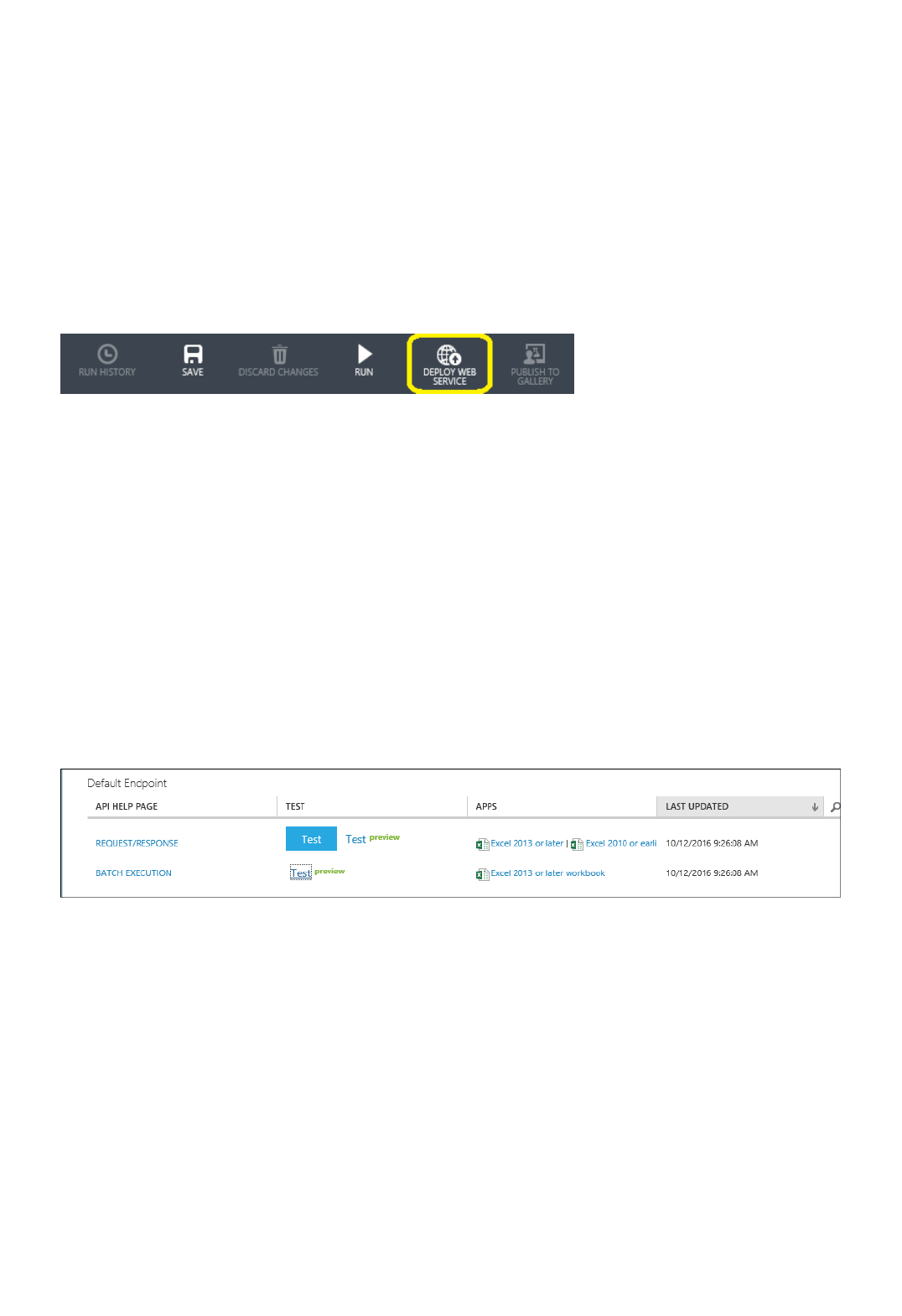

Classic Web services can now be managed in the new Microsoft Azure Machine Learning Web Services portal

that provides one place to manage all aspects of your Web service.

Which provides web service usage statistics.

Simplifies testing of Azure Machine Learning Remote-Request calls using sample data.

Provides a new Batch Execution Service test page with sample data and job submission history.

Provides easier endpoint management.

Web services are now managed as Azure resources managed through Azure Resource Manager interfaces,

allowing for the following enhancements:

Incorporates a new subscription-based, multi-region web service deployment model using Resource Manager

based APIs leveraging the Resource Manager Resource Provider for Web Services.

Introduces new pricing plans and plan management capabilities using the new Resource Manager RP for

Billing.

Provides web service usage statistics.

Simplifies testing of Azure Machine Learning Remote-Request calls using sample data.

Provides a new Batch Execution Service test page with sample data and job submission history.

There are new REST APIs to deploy and manage your Resource Manager based Web services.

There is a new Microsoft Azure Machine Learning Web Services portal that provides one place to

manage all aspects of your Web service.

You can now deploy your web service to multiple regions without needing to create a subscription in

each region.

In addition, the Machine Learning Studio has been updated to allow you to deploy to the new Web service model

or continue to deploy to the classic Web service model.

Machine learning tutorial: Create your first data

science experiment in Azure Machine Learning

Studio

4/9/2018 • 16 min to read • Edit Online

NOTENOTE

NOTENOTE

How does Machine Learning Studio help?

If you've never used Azure Machine Learning Studio before, this tutorial is for you.

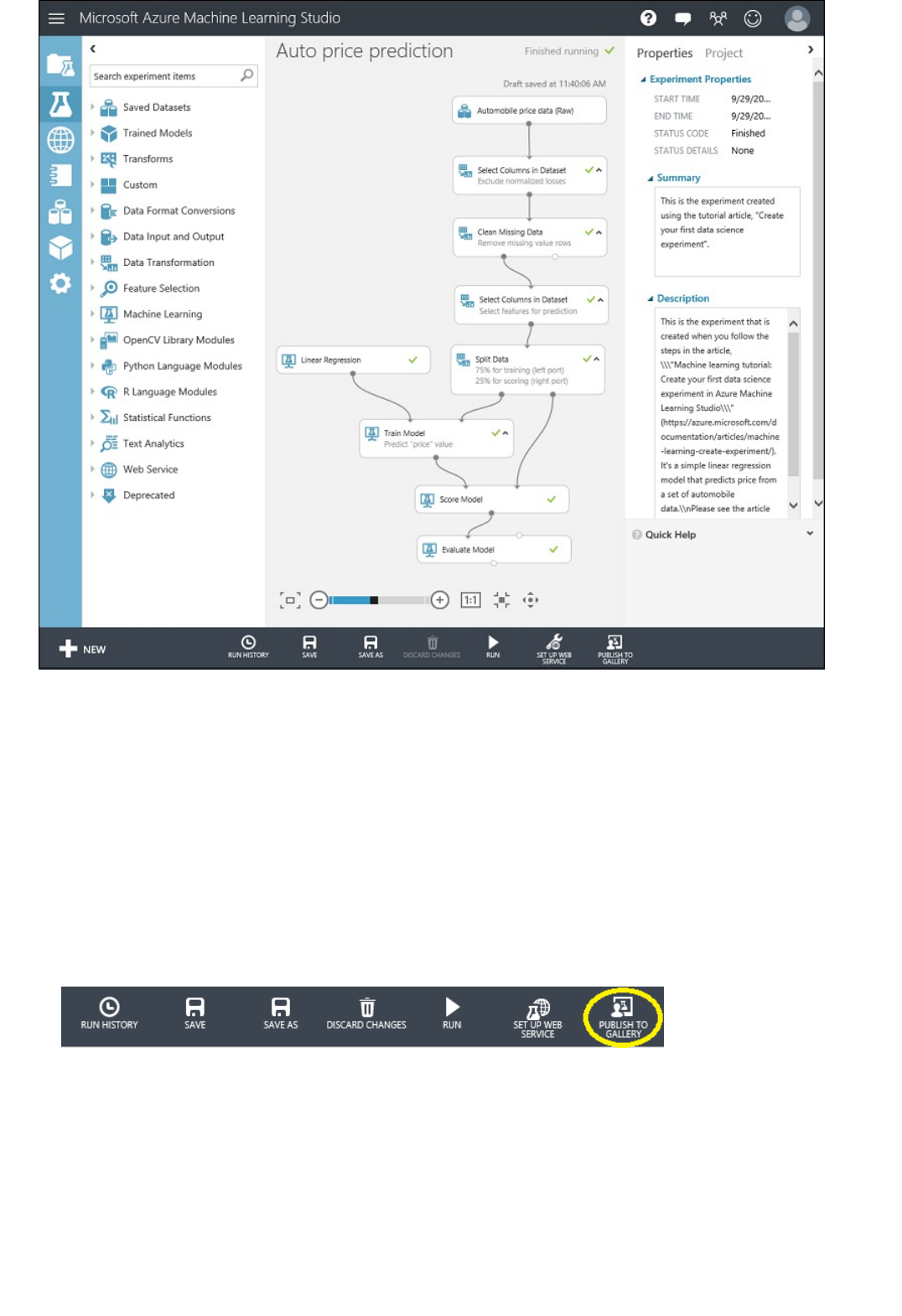

In this tutorial, we'll walk through how to use Studio for the first time to create a machine learning experiment.

The experiment will test an analytical model that predicts the price of an automobile based on different variables

such as make and technical specifications.

This tutorial shows you the basics of how to drag-and-drop modules onto your experiment, connect them together, run

the experiment, and look at the results. We're not going to discuss the general topic of machine learning or how to select

and use the 100+ built-in algorithms and data manipulation modules included in Studio.

If you're new to machine learning, the video series Data Science for Beginners might be a good place to start. This video

series is a great introduction to machine learning using everyday language and concepts.

If you're familiar with machine learning, but you're looking for more general information about Machine Learning Studio,

and the machine learning algorithms it contains, here are some good resources:

What is Machine Learning Studio? - This is a high-level overview of Studio.

Machine learning basics with algorithm examples - This infographic is useful if you want to learn more about the

different types of machine learning algorithms included with Machine Learning Studio.

Machine Learning Guide - This guide covers similar information as the infographic above, but in an interactive format.

Machine learning algorithm cheat sheet and How to choose algorithms for Microsoft Azure Machine Learning - This

downloadable poster and accompanying article discuss the Studio algorithms in depth.

Machine Learning Studio: Algorithm and Module Help - This is the complete reference for all Studio modules, including

machine learning algorithms,

You can try Azure Machine Learning for free. No credit card or Azure subscription is required. Get started now.

Machine Learning Studio makes it easy to set up an experiment using drag-and-drop modules preprogrammed

with predictive modeling techniques.

Using an interactive, visual workspace, you drag-and-drop datasets and analysis modules onto an interactive

canvas. You connect them together to form an experiment that you run in Machine Learning Studio. You create

a model, train the model, and score and test the model.

You can iterate on your model design, editing the experiment and running it until it gives you the results you're

looking for. When your model is ready, you can publish it as a web service so that others can send it new data

and get predictions in return.

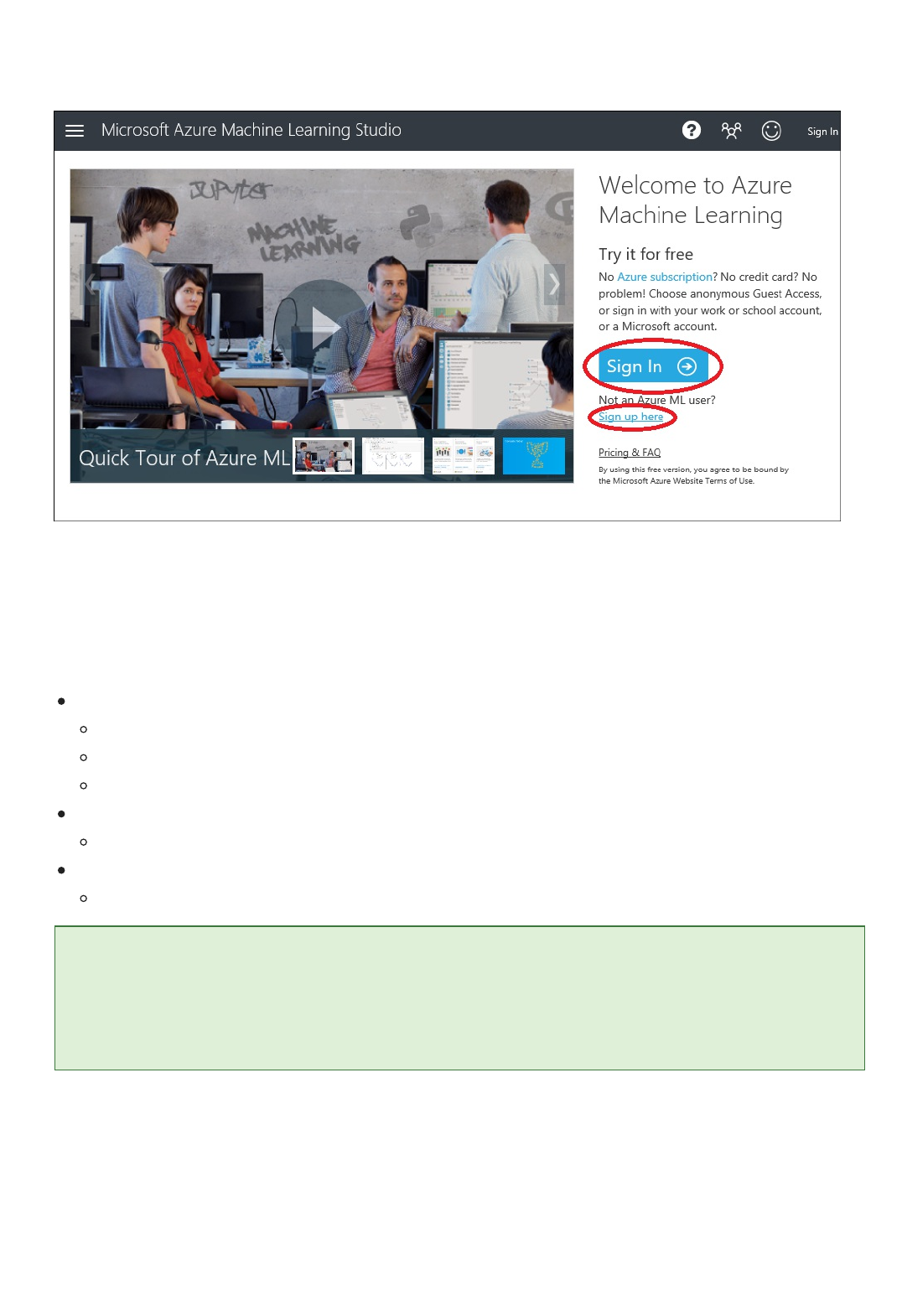

Open Machine Learning Studio

Five steps to create an experiment

TIPTIP

Step 1: Get data

To get started with Studio, go to https://studio.azureml.net. If you’ve signed into Machine Learning Studio before,

click Sign In. Otherwise, click Sign up here and choose between free and paid options.

Sign in to Machine Learning Studio

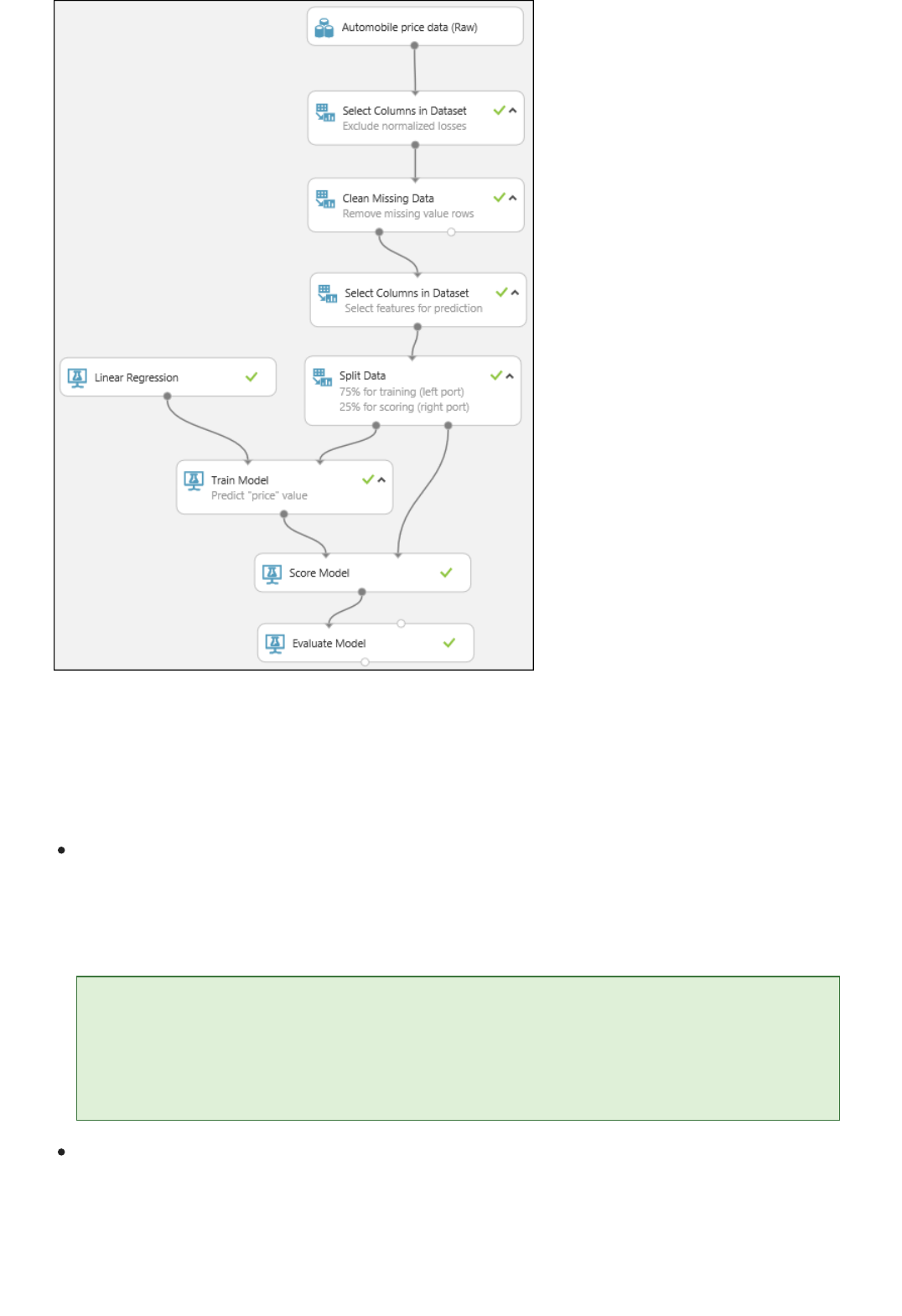

In this machine learning tutorial, you'll follow five basic steps to build an experiment in Machine Learning Studio

to create, train, and score your model:

Create a model

Train the model

Score and test the model

Step 1: Get data

Step 2: Prepare the data

Step 3: Define features

Step 4: Choose and apply a learning algorithm

Step 5: Predict new automobile prices

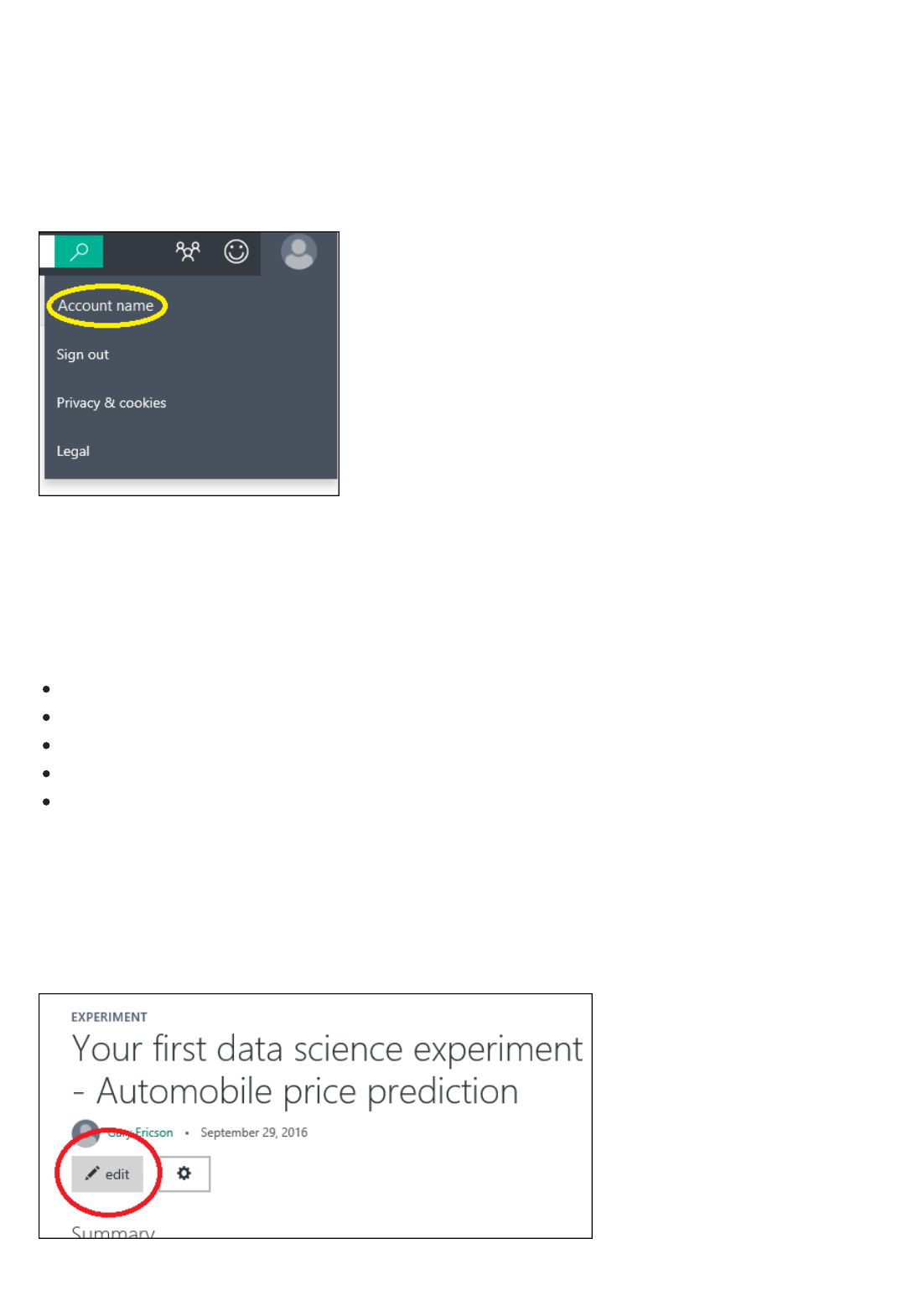

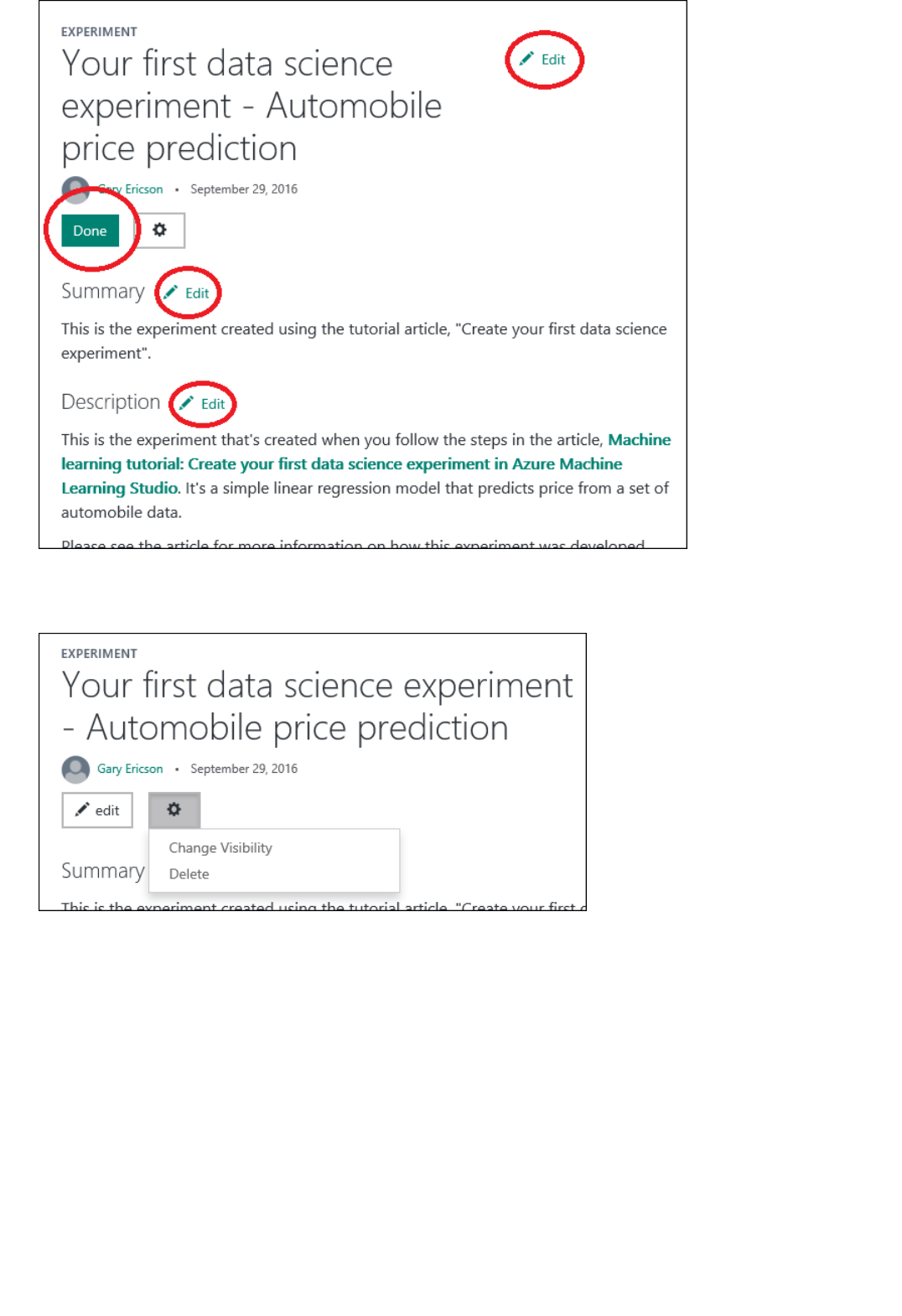

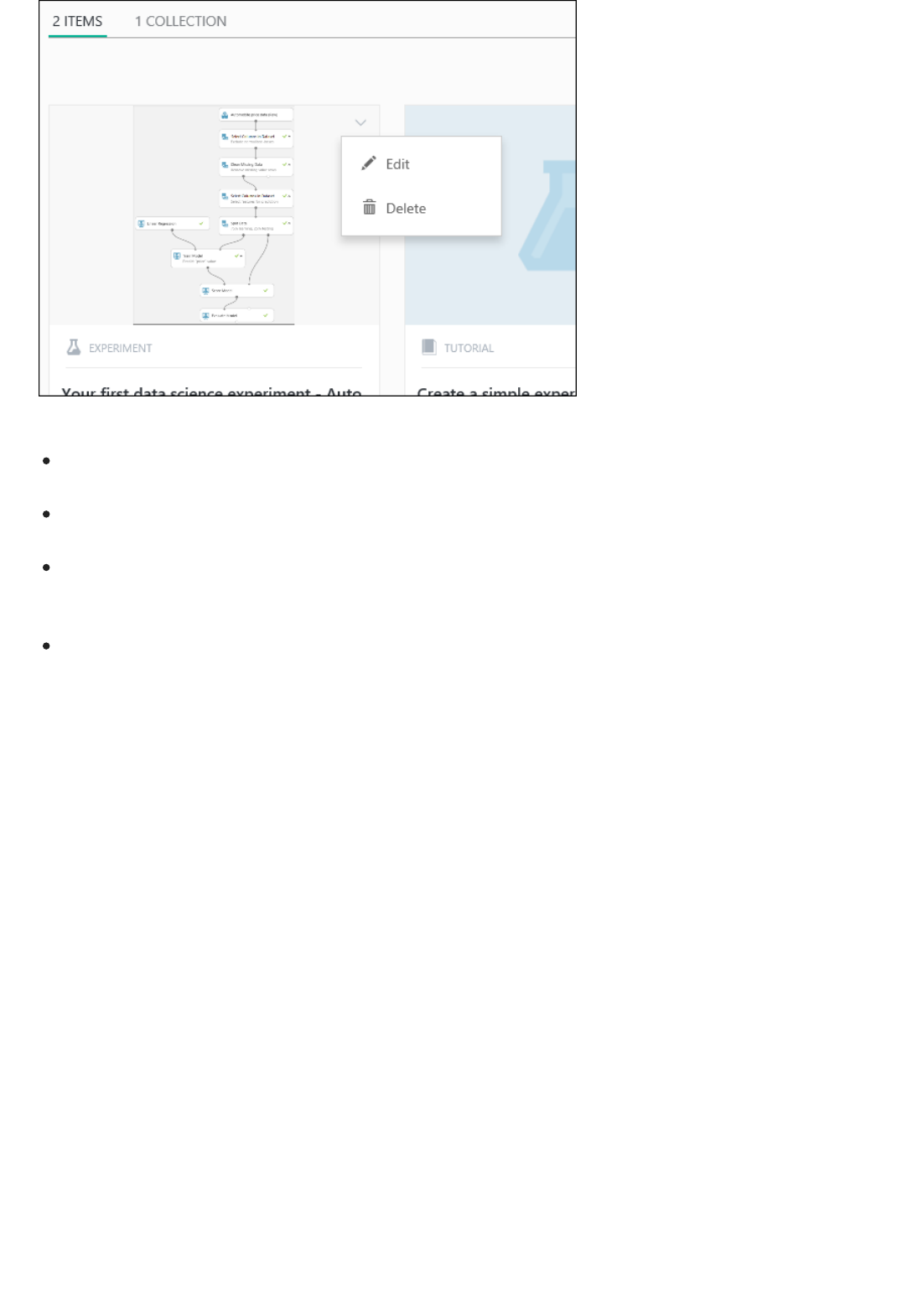

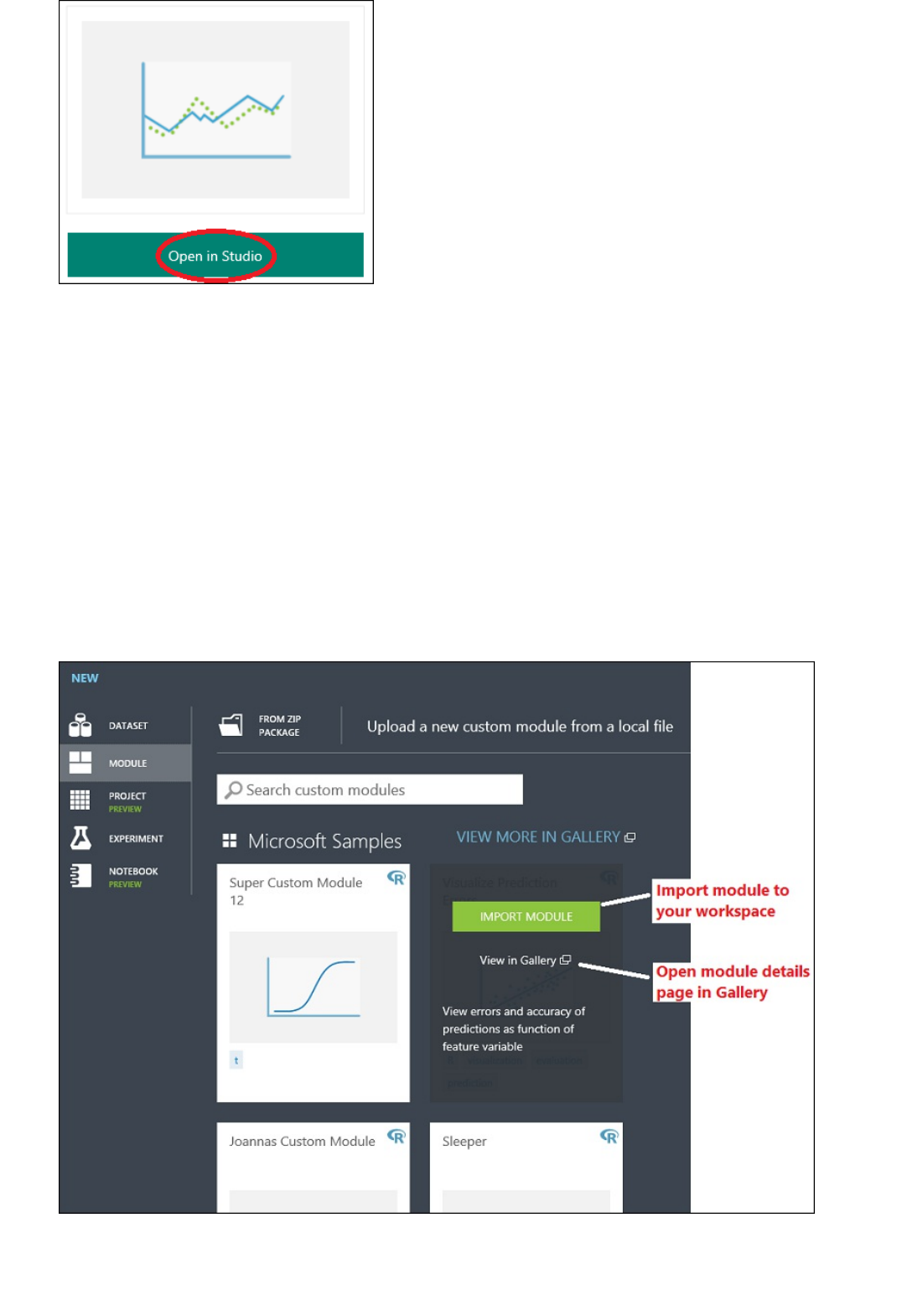

You can find a working copy of the following experiment in the Azure AI Gallery. Go to Your first data science

experiment - Automobile price prediction and click Open in Studio to download a copy of the experiment into your

Machine Learning Studio workspace.

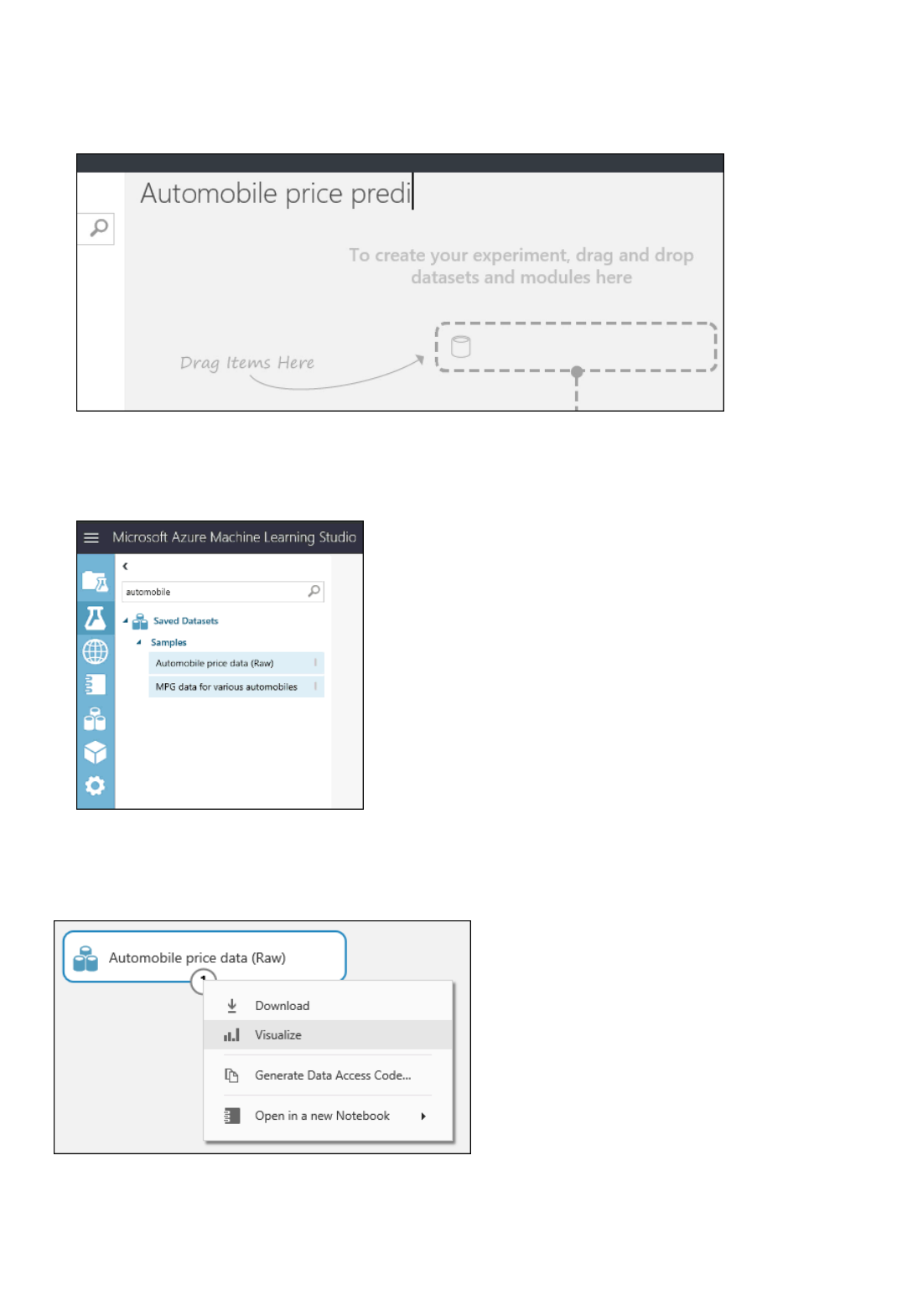

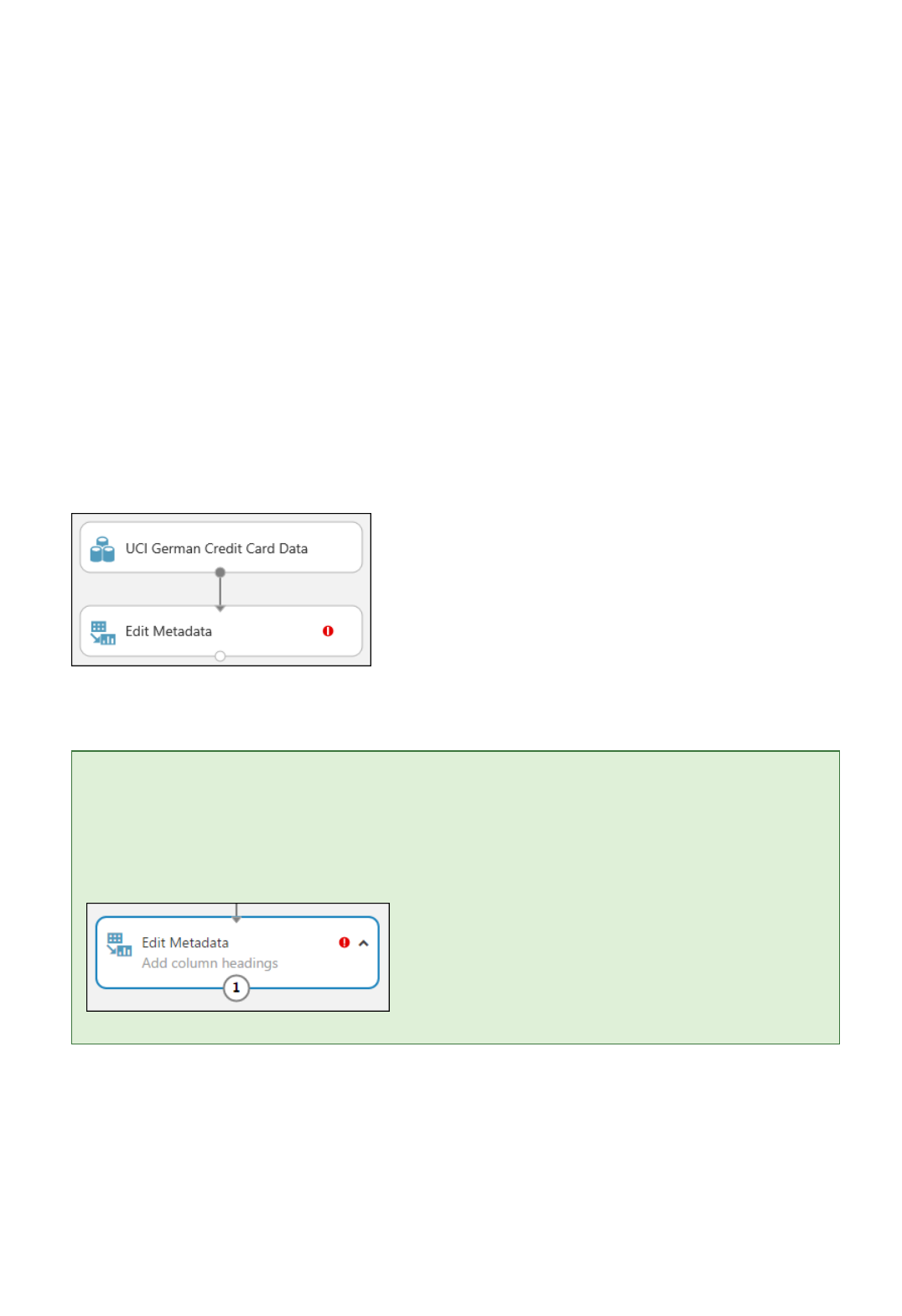

The first thing you need to perform machine learning is data. There are several sample datasets included with

Machine Learning Studio that you can use, or you can import data from many sources. For this example, we'll

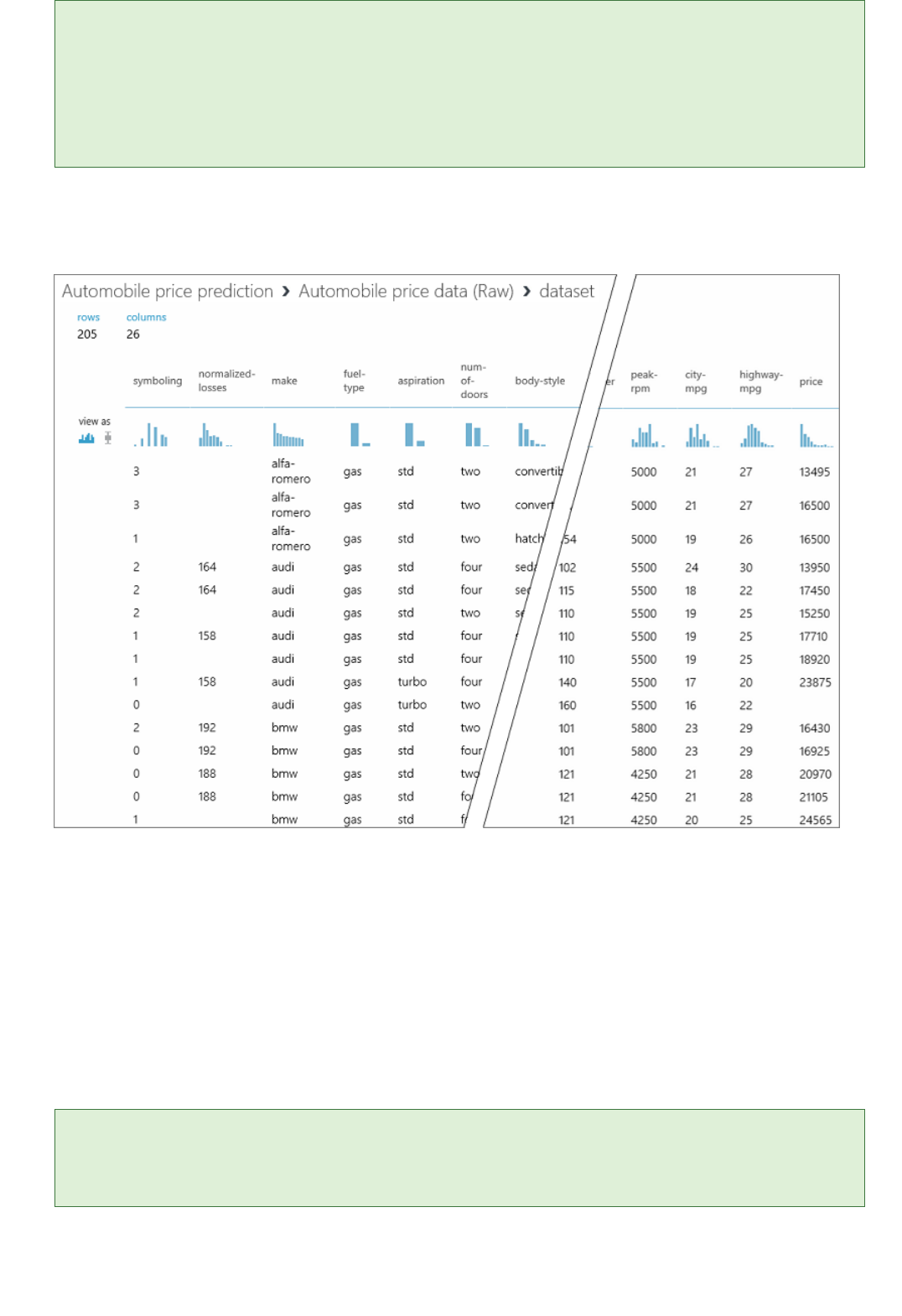

use the sample dataset, Automobile price data (Raw), that's included in your workspace. This dataset includes

entries for various individual automobiles, including information such as make, model, technical specifications,

and price.

Here's how to get the dataset into your experiment.

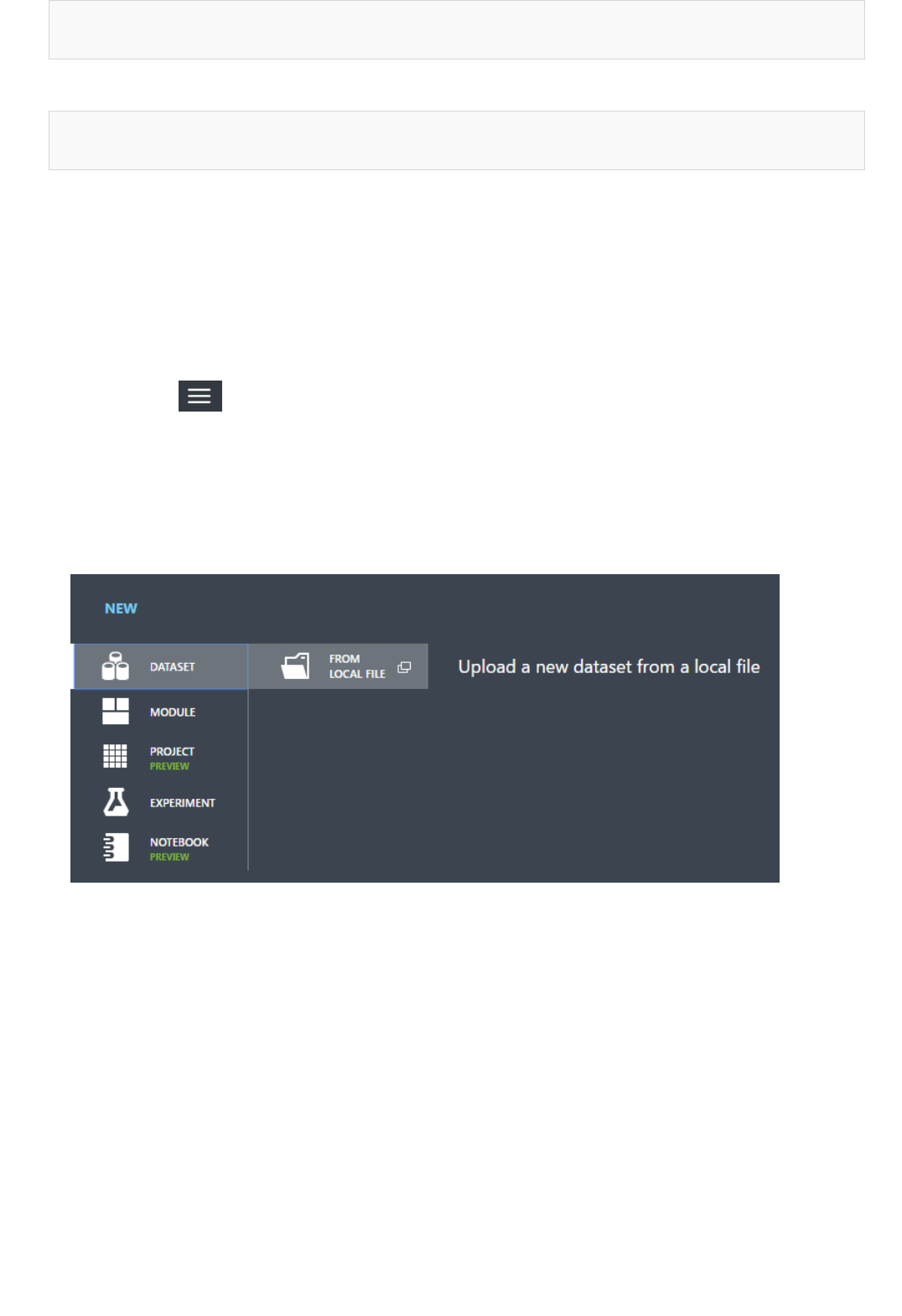

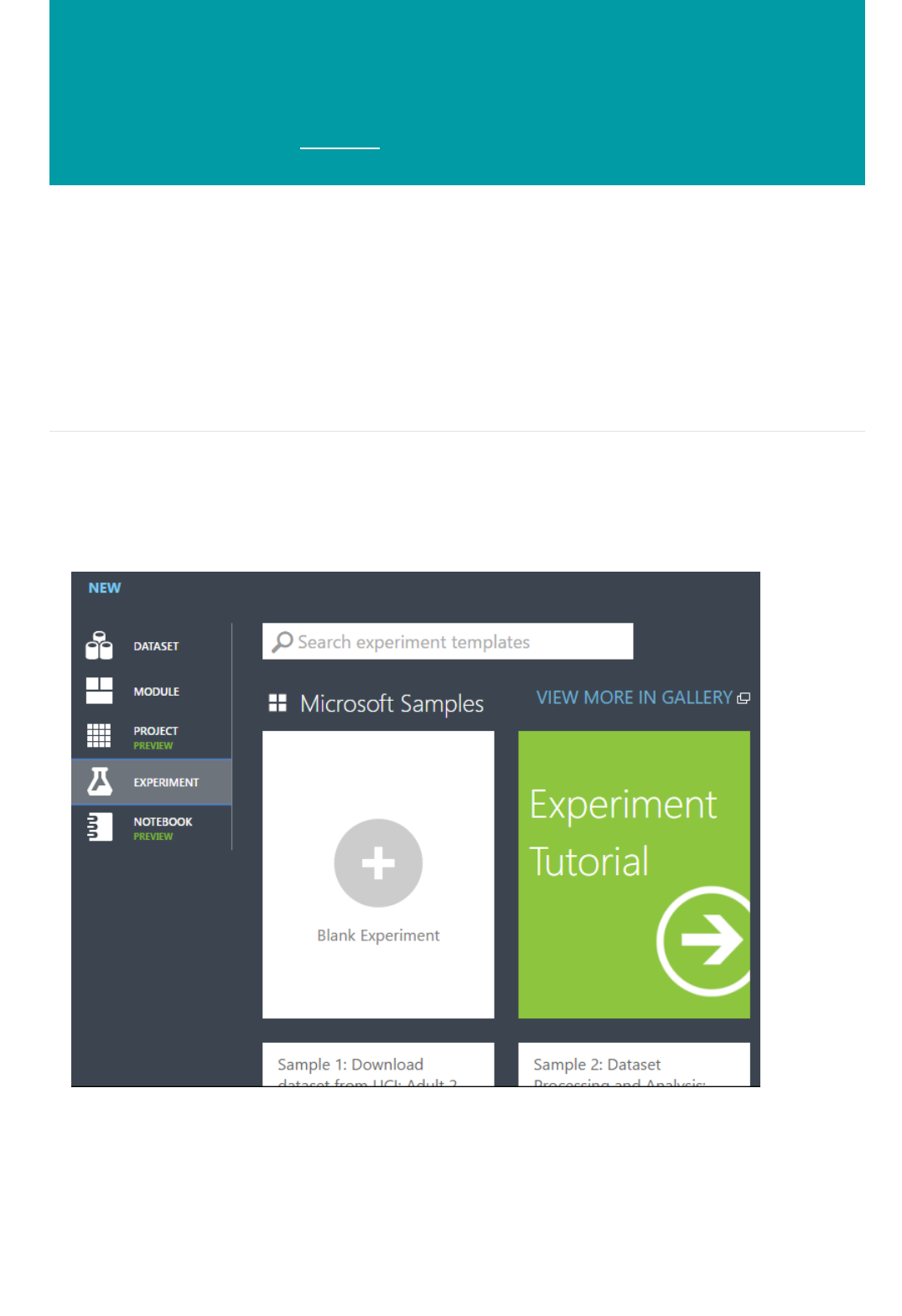

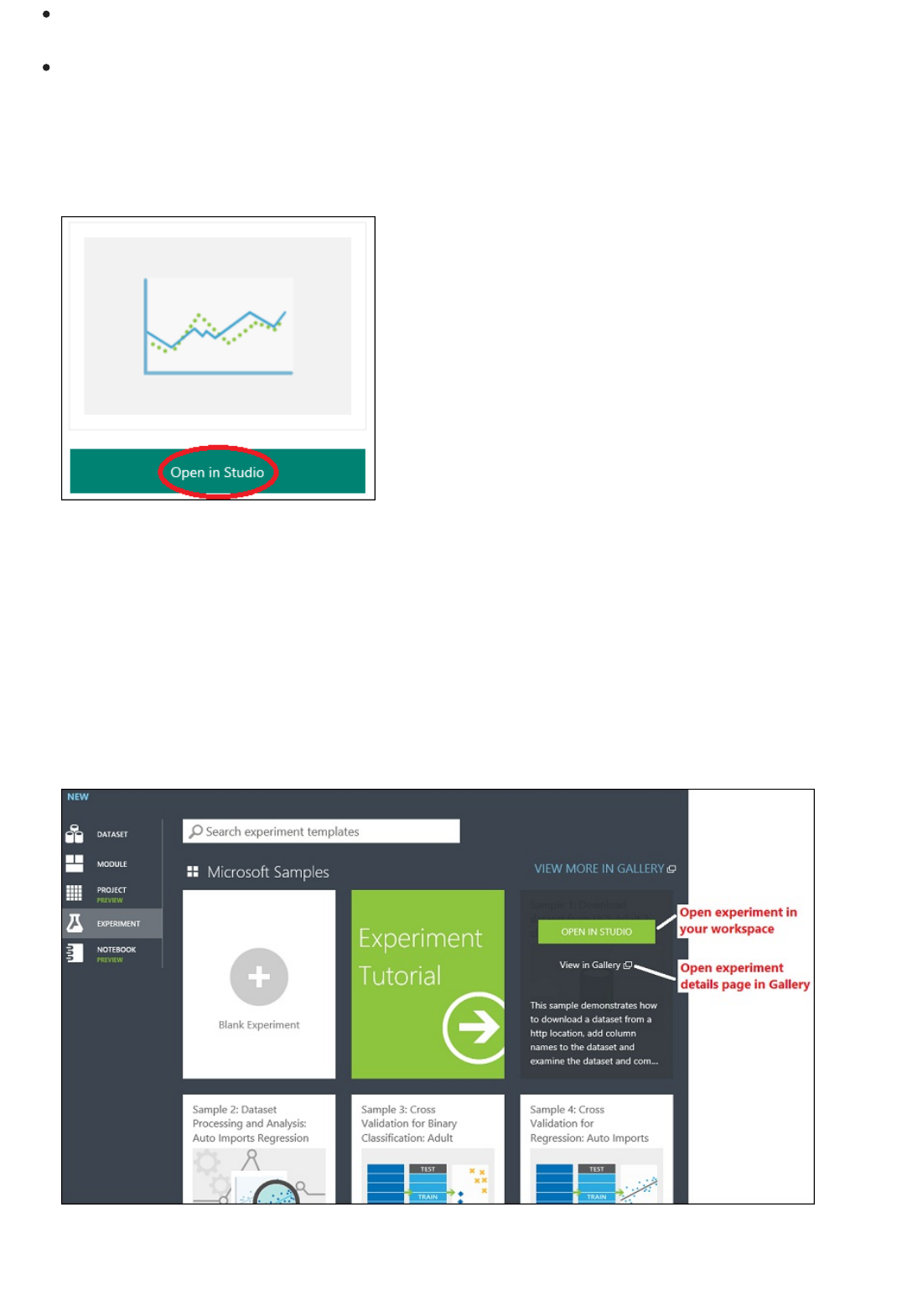

1. Create a new experiment by clicking +NEW at the bottom of the Machine Learning Studio window, select

EXPERIMENT, and then select Blank Experiment.

2. The experiment is given a default name that you can see at the top of the canvas. Select this text and

rename it to something meaningful, for example, Automobile price prediction. The name doesn't need

to be unique.

3. To the left of the experiment canvas is a palette of datasets and modules. Type automobile in the Search

box at the top of this palette to find the dataset labeled Automobile price data (Raw). Drag this dataset

to the experiment canvas.

Find the automobile dataset and drag it onto the experiment canvas

To see what this data looks like, click the output port at the bottom of the automobile dataset, and then select

Visualize.

Click the output port and select "Visualize"

TIPTIP

Step 2: Prepare the data

TIPTIP

Datasets and modules have input and output ports represented by small circles - input ports at the top, output ports at

the bottom. To create a flow of data through your experiment, you'll connect an output port of one module to an input

port of another. At any time, you can click the output port of a dataset or module to see what the data looks like at that

point in the data flow.

In this sample dataset, each instance of an automobile appears as a row, and the variables associated with each

automobile appear as columns. Given the variables for a specific automobile, we're going to try to predict the

price in far-right column (column 26, titled "price").

View the automobile data in the data visualization window

Close the visualization window by clicking the "x" in the upper-right corner.

A dataset usually requires some preprocessing before it can be analyzed. For example, you might have noticed

the missing values present in the columns of various rows. These missing values need to be cleaned so the

model can analyze the data correctly. In our case, we'll remove any rows that have missing values. Also, the

normalized-losses column has a large proportion of missing values, so we'll exclude that column from the

model altogether.

Cleaning the missing values from input data is a prerequisite for using most of the modules.

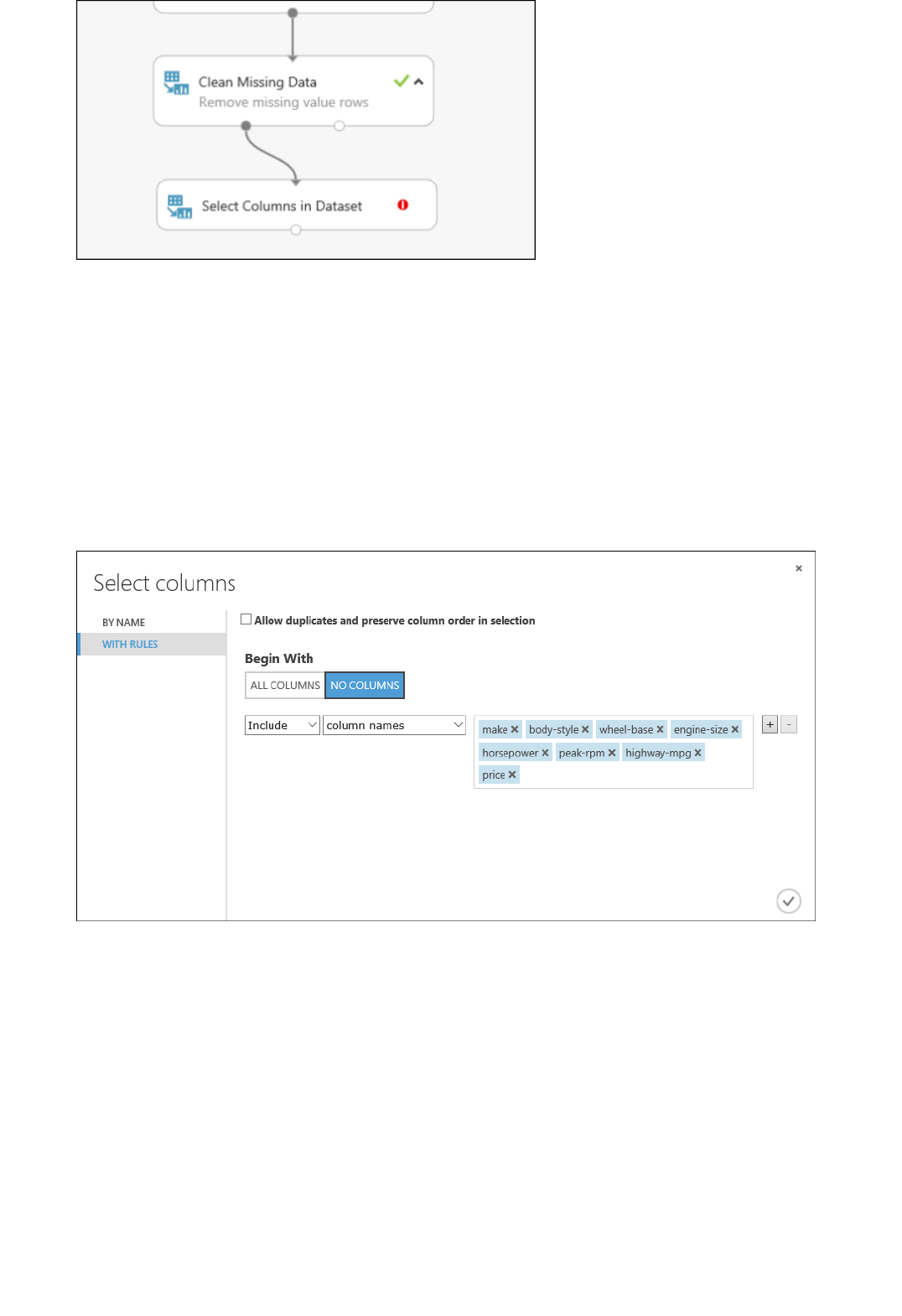

First we add a module that removes the normalized-losses column completely, and then we add another

module that removes any row that has missing data.

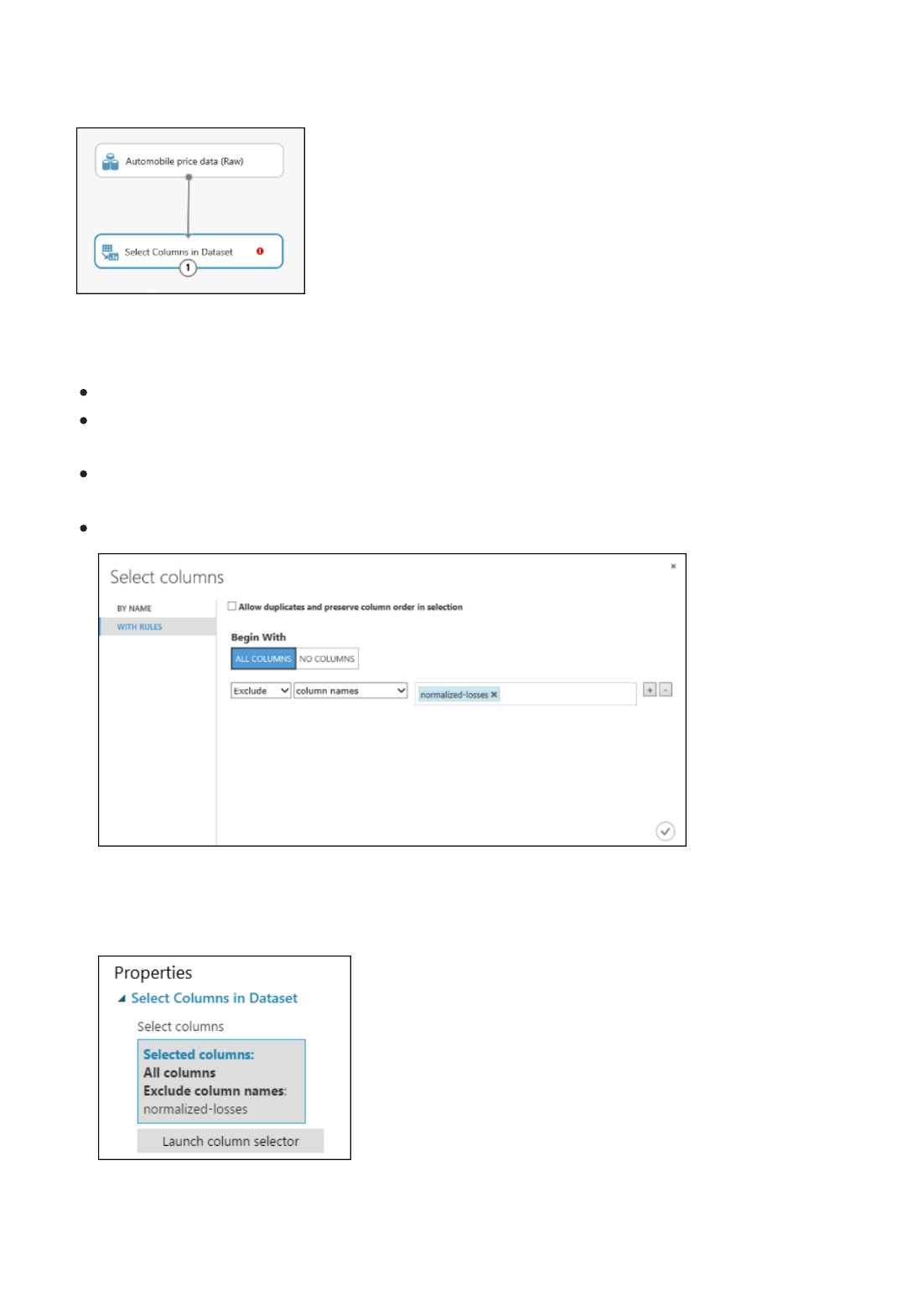

1. Type select columns in the Search box at the top of the module palette to find the Select Columns in

Dataset module, then drag it to the experiment canvas. This module allows us to select which columns of

data we want to include or exclude in the model.

2. Connect the output port of the Automobile price data (Raw) dataset to the input port of the Select

Columns in Dataset module.

Add the "Select Columns in Dataset" module to the experiment canvas and connect it

3. Click the Select Columns in Dataset module and click Launch column selector in the Properties pane.

On the left, click With rules

Under Begin With, click All columns. This directs Select Columns in Dataset to pass through all the

columns (except those columns we're about to exclude).

From the drop-downs, select Exclude and column names, and then click inside the text box. A list of

columns is displayed. Select normalized-losses, and it's added to the text box.

Click the check mark (OK) button to close the column selector (on the lower-right).

Launch the column selector and exclude the "normalized-losses" column

Now the properties pane for Select Columns in Dataset indicates that it will pass through all

columns from the dataset except normalized-losses.

The properties pane shows that the "normalized-losses" column is excluded

TIPTIP

You can add a comment to a module by double-clicking the module and entering text. This can help you

see at a glance what the module is doing in your experiment. In this case double-click the Select Columns in

Dataset module and type the comment "Exclude normalized losses."

Double-click a module to add a comment

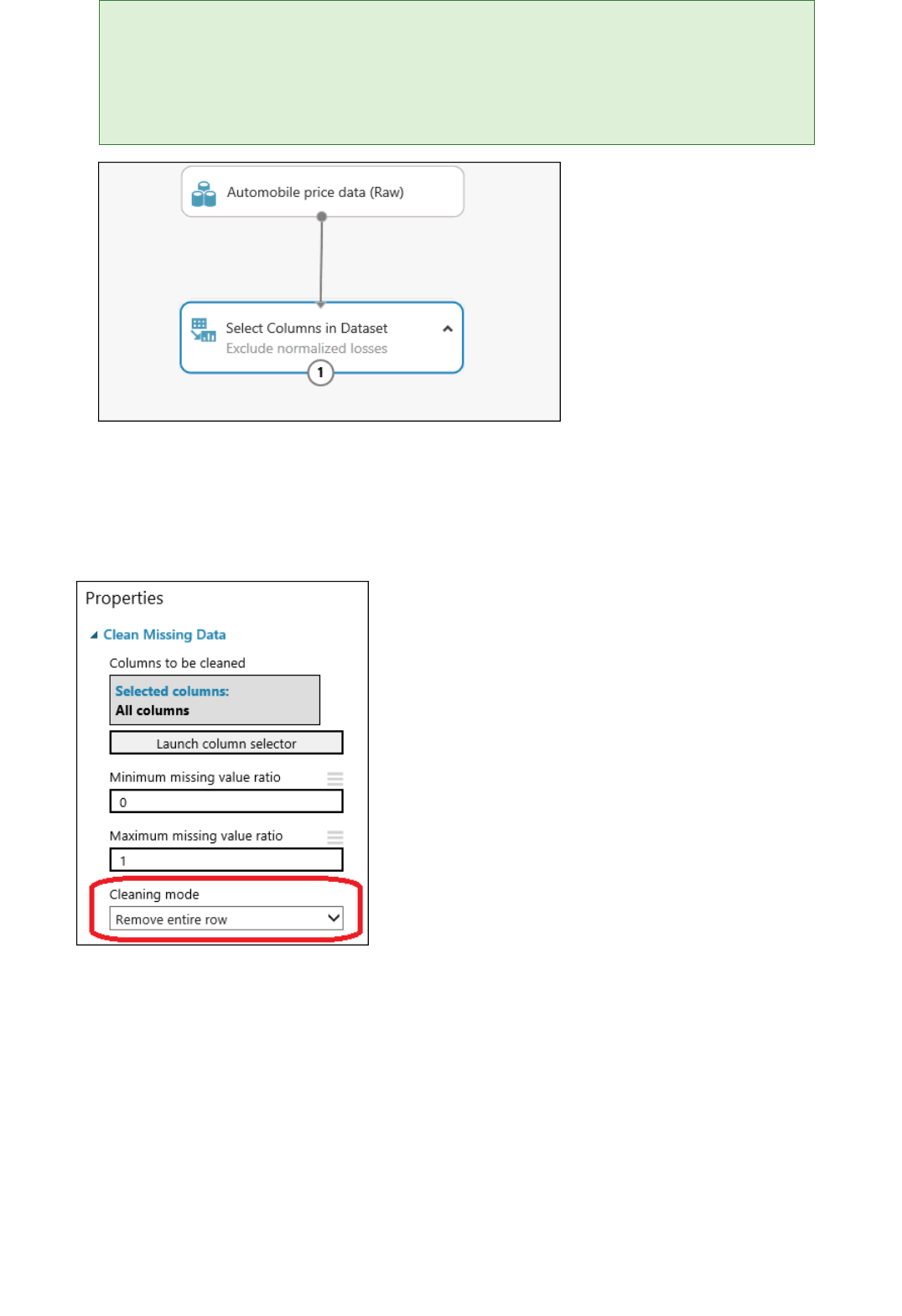

4. Drag the Clean Missing Data module to the experiment canvas and connect it to the Select Columns in

Dataset module. In the Properties pane, select Remove entire row under Cleaning mode. This directs

Clean Missing Data to clean the data by removing rows that have any missing values. Double-click the

module and type the comment "Remove missing value rows."

Set the cleaning mode to "Remove entire row" for the "Clean Missing Data" module

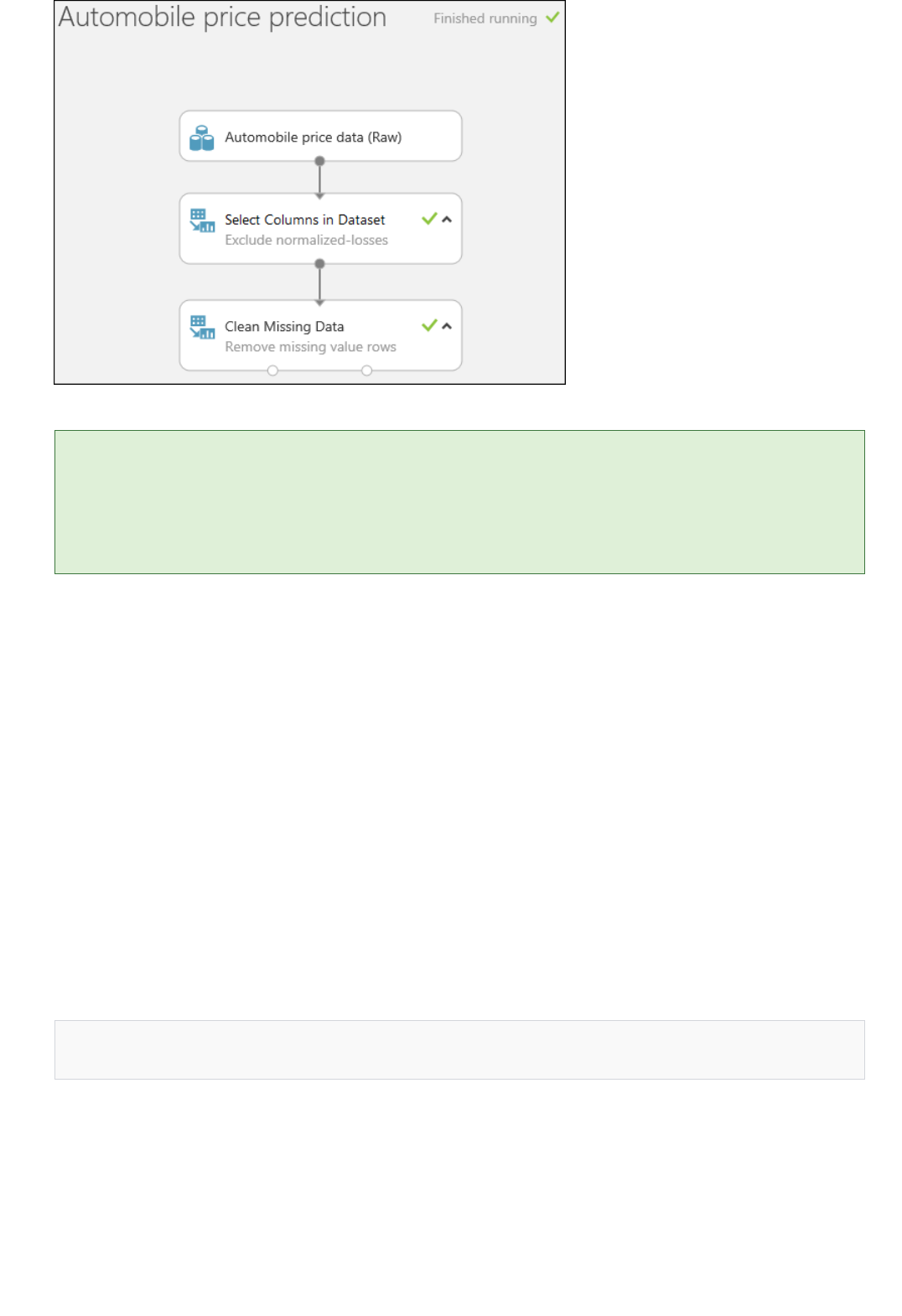

5. Run the experiment by clicking RUN at the bottom of the page.

When the experiment has finished running, all the modules have a green check mark to indicate that they

finished successfully. Notice also the Finished running status in the upper-right corner.

TIPTIP

Step 3: Define features

make, body-style, wheel-base, engine-size, horsepower, peak-rpm, highway-mpg, price

After running it, the experiment should look something like this

Why did we run the experiment now? By running the experiment, the column definitions for our data pass from the

dataset, through the Select Columns in Dataset module, and through the Clean Missing Data module. This means that any

modules we connect to Clean Missing Data will also have this same information.

All we have done in the experiment up to this point is clean the data. If you want to view the cleaned dataset, click

the left output port of the Clean Missing Data module and select Visualize. Notice that the normalized-losses

column is no longer included, and there are no missing values.

Now that the data is clean, we're ready to specify what features we're going to use in the predictive model.

In machine learning, features are individual measurable properties of something you’re interested in. In our

dataset, each row represents one automobile, and each column is a feature of that automobile.

Finding a good set of features for creating a predictive model requires experimentation and knowledge about the

problem you want to solve. Some features are better for predicting the target than others. Also, some features

have a strong correlation with other features and can be removed. For example, city-mpg and highway-mpg are

closely related so we can keep one and remove the other without significantly affecting the prediction.

Let's build a model that uses a subset of the features in our dataset. You can come back later and select different

features, run the experiment again, and see if you get better results. But to start, let's try the following features:

1. Drag another Select Columns in Dataset module to the experiment canvas. Connect the left output port of

the Clean Missing Data module to the input of the Select Columns in Dataset module.

Step 4: Choose and apply a learning algorithm

Connect the "Select Columns in Dataset" module to the "Clean Missing Data" module

2. Double-click the module and type "Select features for prediction."

3. Click Launch column selector in the Properties pane.

4. Click With rules.

5. Under Begin With, click No columns. In the filter row, select Include and column names and select

our list of column names in the text box. This directs the module to not pass through any columns

(features) except the ones that we specify.

6. Click the check mark (OK) button.

Select the columns (features) to include in the prediction

This produces a filtered dataset containing only the features we want to pass to the learning algorithm we'll use

in the next step. Later, you can return and try again with a different selection of features.

Now that the data is ready, constructing a predictive model consists of training and testing. We'll use our data to

train the model, and then we'll test the model to see how closely it's able to predict prices.

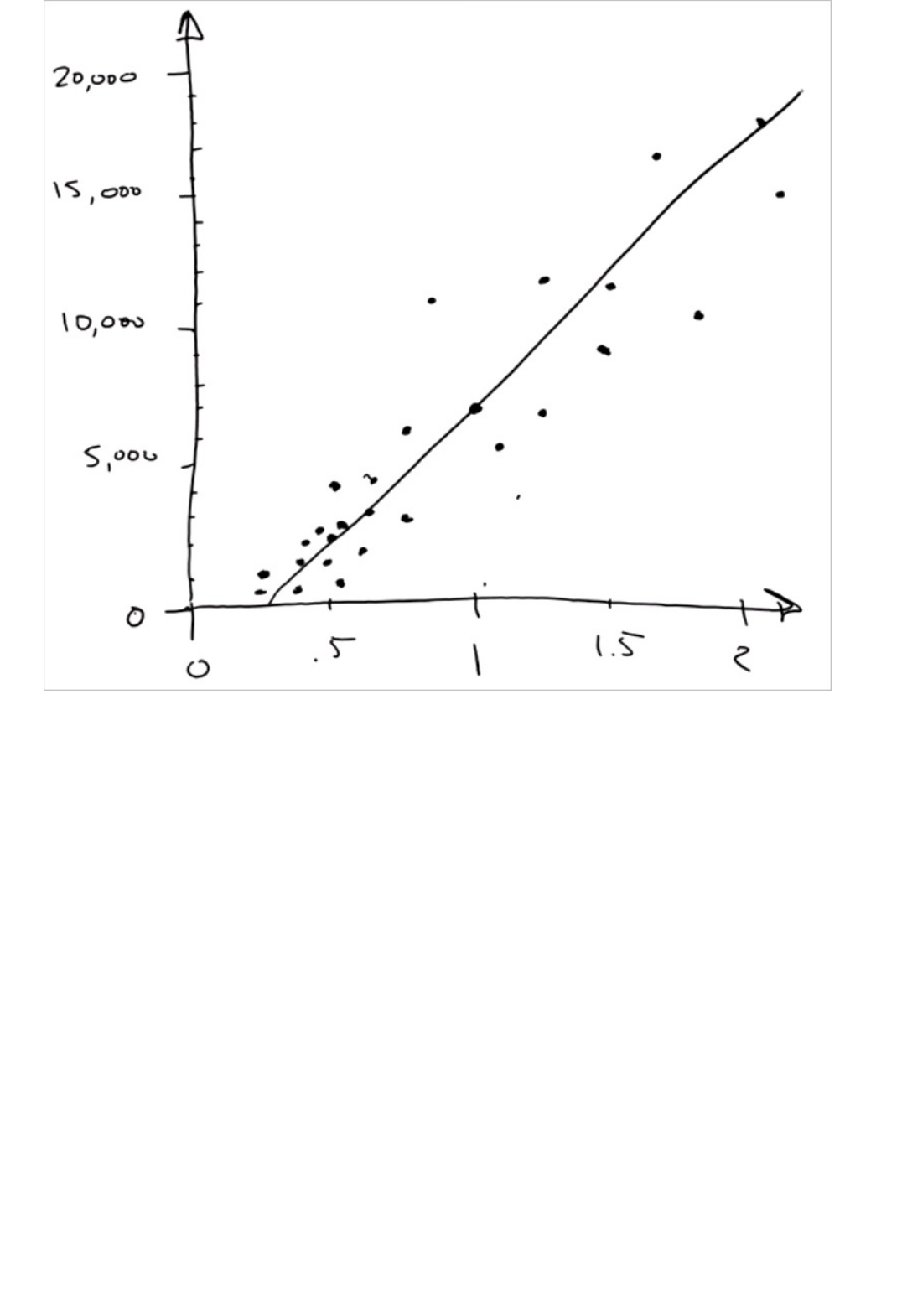

Classification and regression are two types of supervised machine learning algorithms. Classification predicts an

answer from a defined set of categories, such as a color (red, blue, or green). Regression is used to predict a

number.

Because we want to predict price, which is a number, we'll use a regression algorithm. For this example, we'll use

a simple linear regression model.

TIPTIP

If you want to learn more about different types of machine learning algorithms and when to use them, you might view the

first video in the Data Science for Beginners series, The five questions data science answers. You might also look at the

infographic Machine learning basics with algorithm examples, or check out the Machine learning algorithm cheat sheet.

We train the model by giving it a set of data that includes the price. The model scans the data and look for

correlations between an automobile's features and its price. Then we'll test the model - we'll give it a set of

features for automobiles we're familiar with and see how close the model comes to predicting the known price.

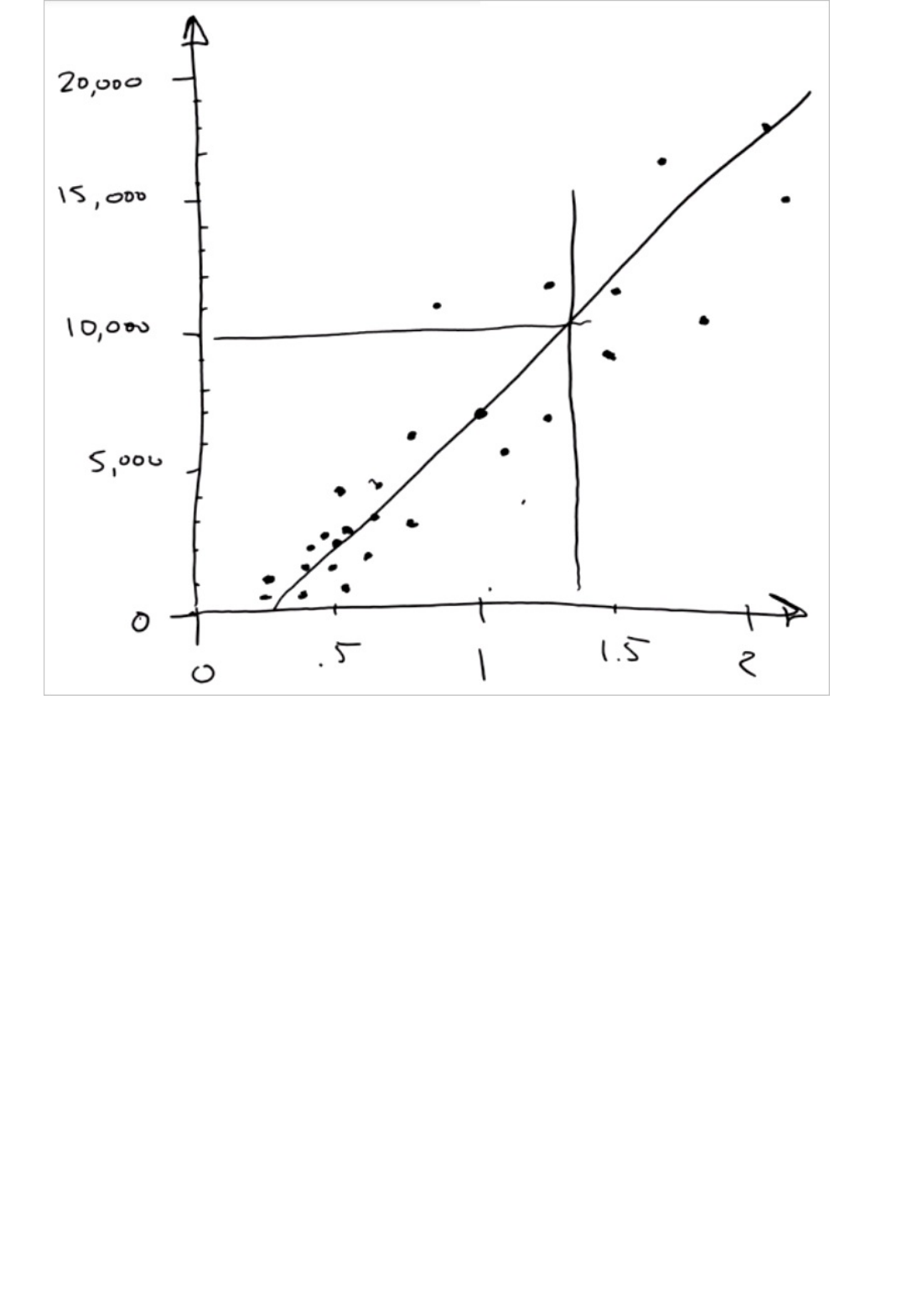

We'll use our data for both training the model and testing it by splitting the data into separate training and

testing datasets.

TIPTIP

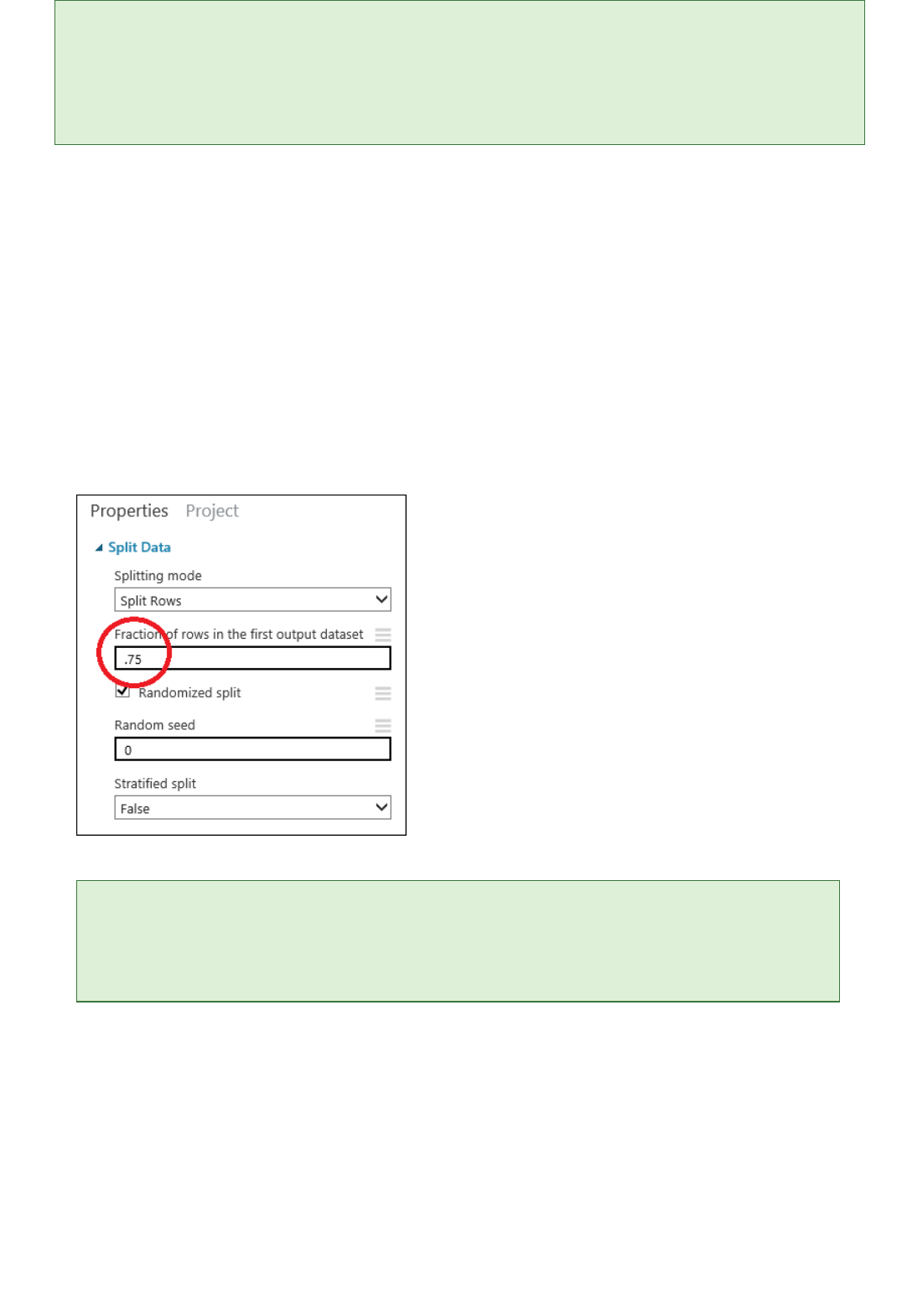

1. Select and drag the Split Data module to the experiment canvas and connect it to the last Select Columns

in Dataset module.

2. Click the Split Data module to select it. Find the Fraction of rows in the first output dataset (in the

Properties pane to the right of the canvas) and set it to 0.75. This way, we'll use 75 percent of the data to

train the model, and hold back 25 percent for testing (later, you can experiment with using different

percentages).

Set the split fraction of the "Split Data" module to 0.75

By changing the Random seed parameter, you can produce different random samples for training and testing.

This parameter controls the seeding of the pseudo-random number generator.

3. Run the experiment. When the experiment is run, the Select Columns in Dataset and Split Data modules

pass column definitions to the modules we'll be adding next.

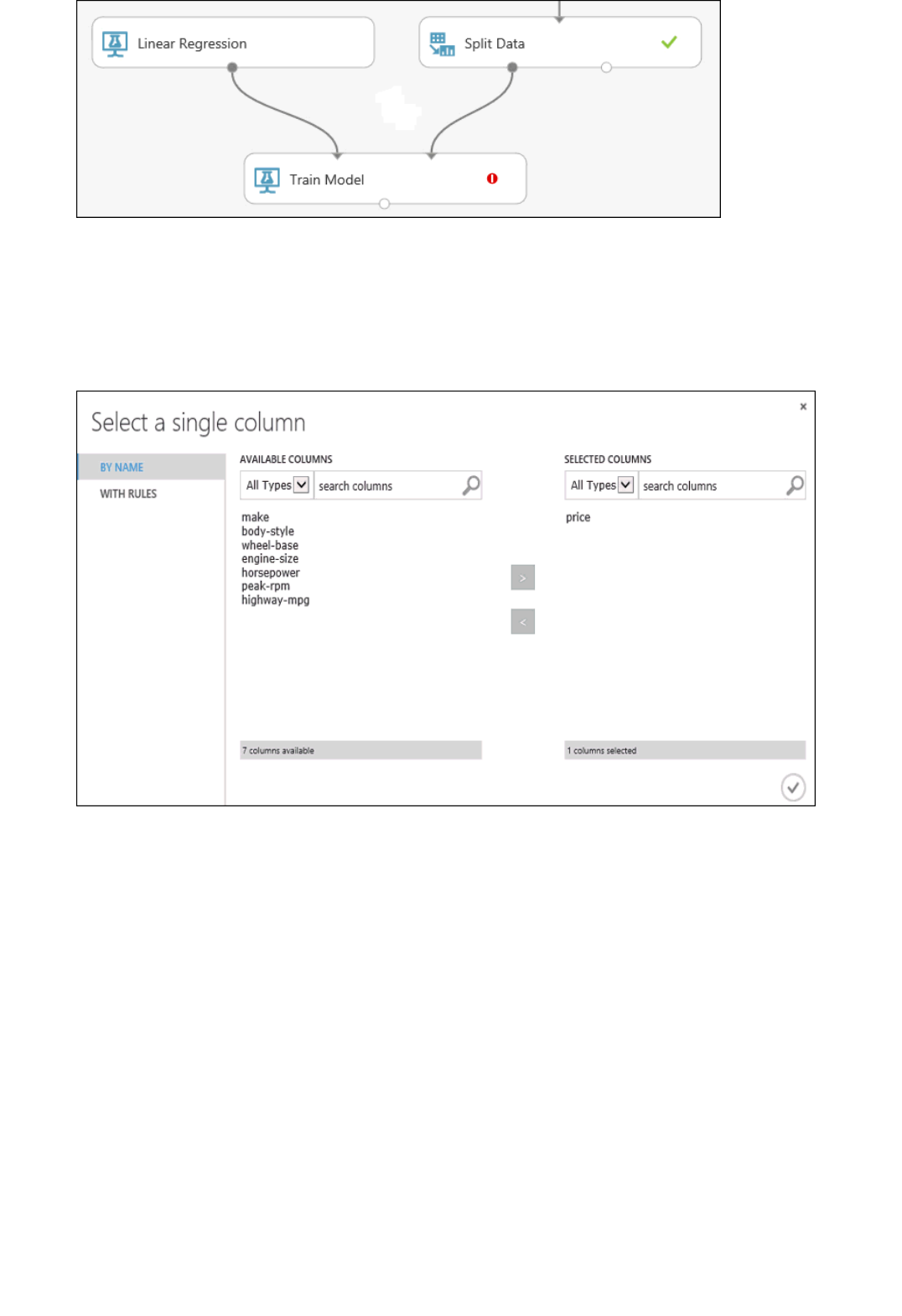

4. To select the learning algorithm, expand the Machine Learning category in the module palette to the left

of the canvas, and then expand Initialize Model. This displays several categories of modules that can be

used to initialize machine learning algorithms. For this experiment, select the Linear Regression module

under the Regression category, and drag it to the experiment canvas. (You can also find the module by

typing "linear regression" in the palette Search box.)

5. Find and drag the Train Model module to the experiment canvas. Connect the output of the Linear

Regression module to the left input of the Train Model module, and connect the training data output (left

port) of the Split Data module to the right input of the Train Model module.

Connect the "Train Model" module to both the "Linear Regression" and "Split Data" modules

6. Click the Train Model module, click Launch column selector in the Properties pane, and then select the

price column. This is the value that our model is going to predict.

You select the price column in the column selector by moving it from the Available columns list to the

Selected columns list.

Select the price column for the "Train Model" module

7. Run the experiment.

We now have a trained regression model that can be used to score new automobile data to make price

predictions.

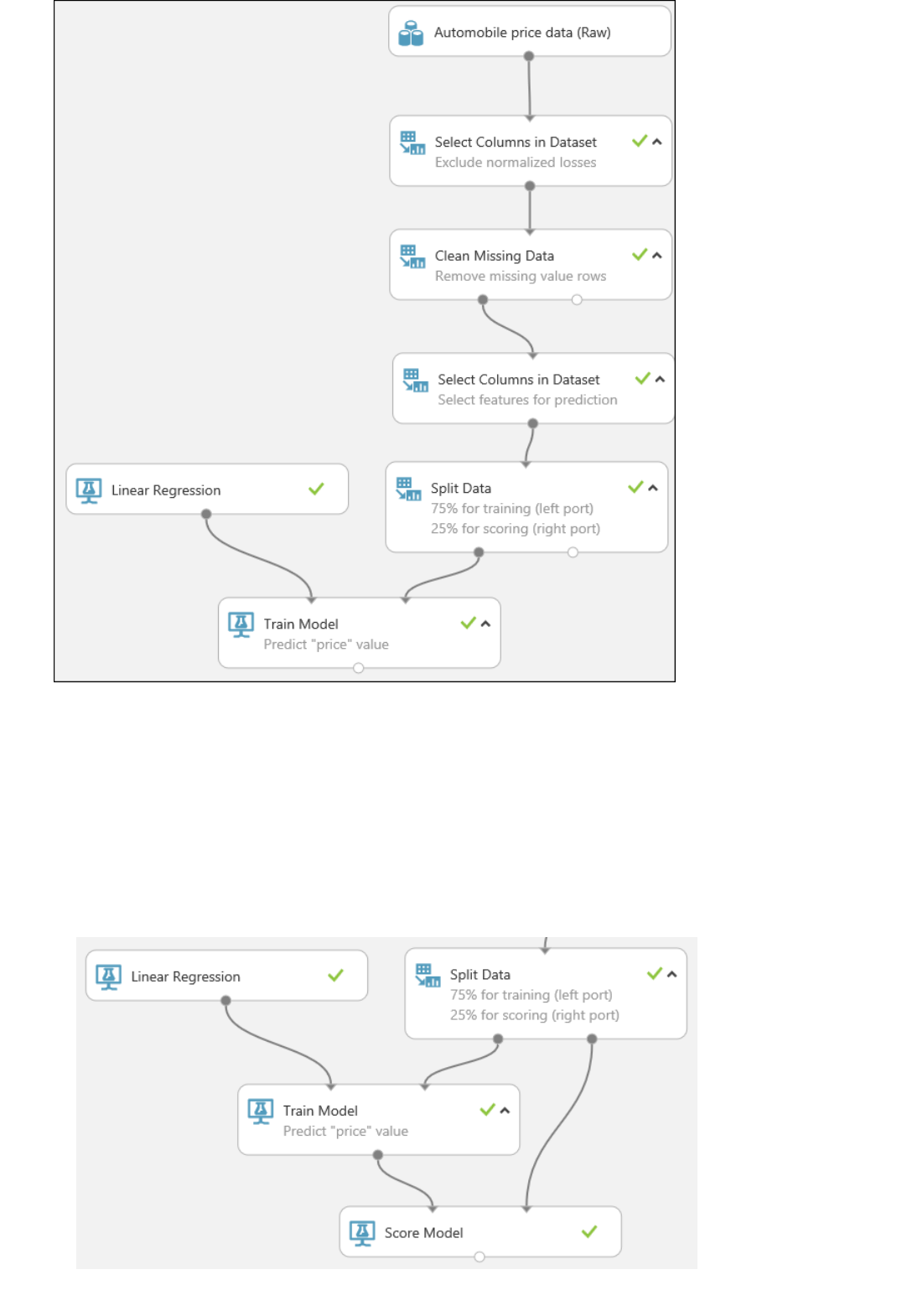

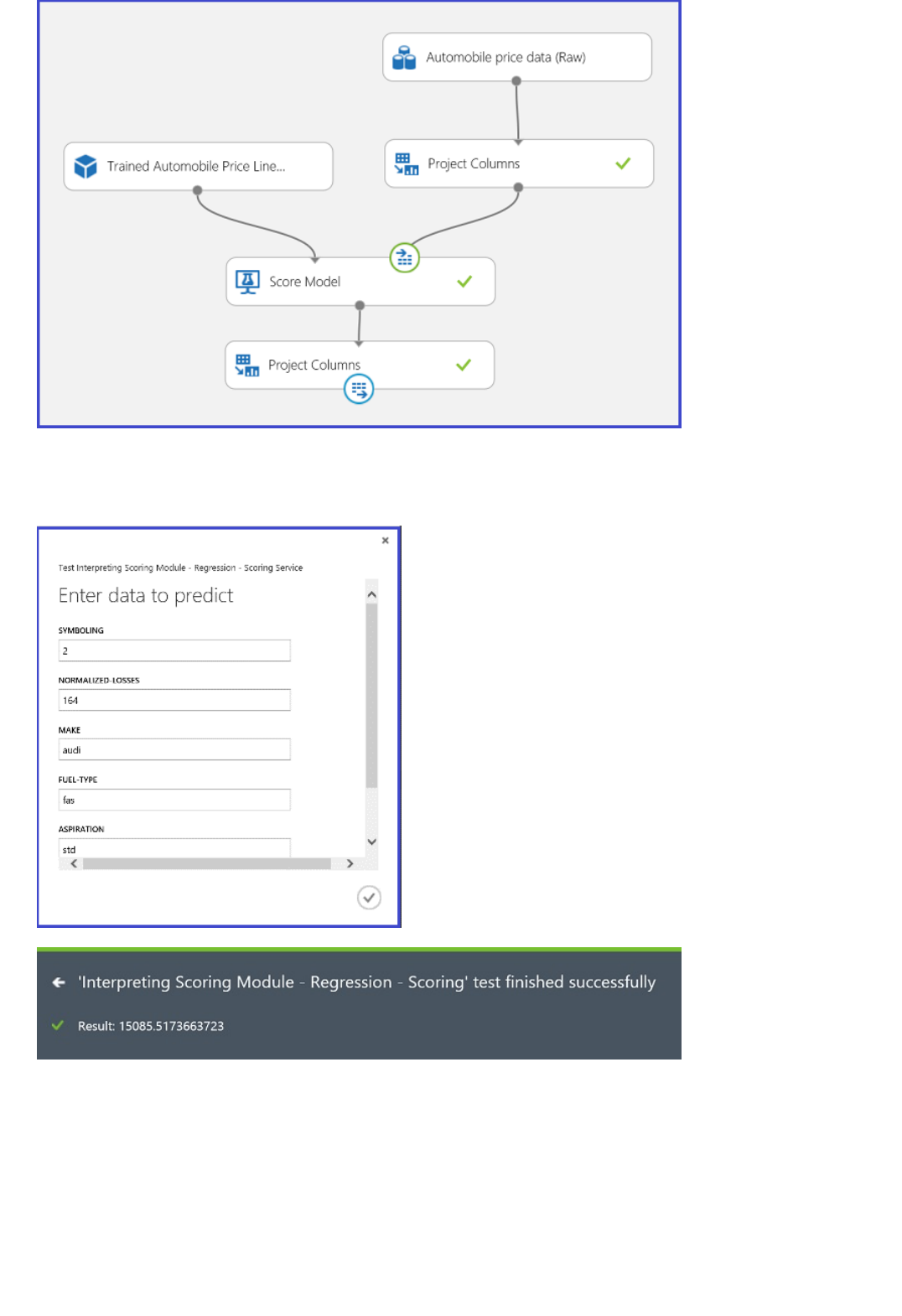

Step 5: Predict new automobile prices

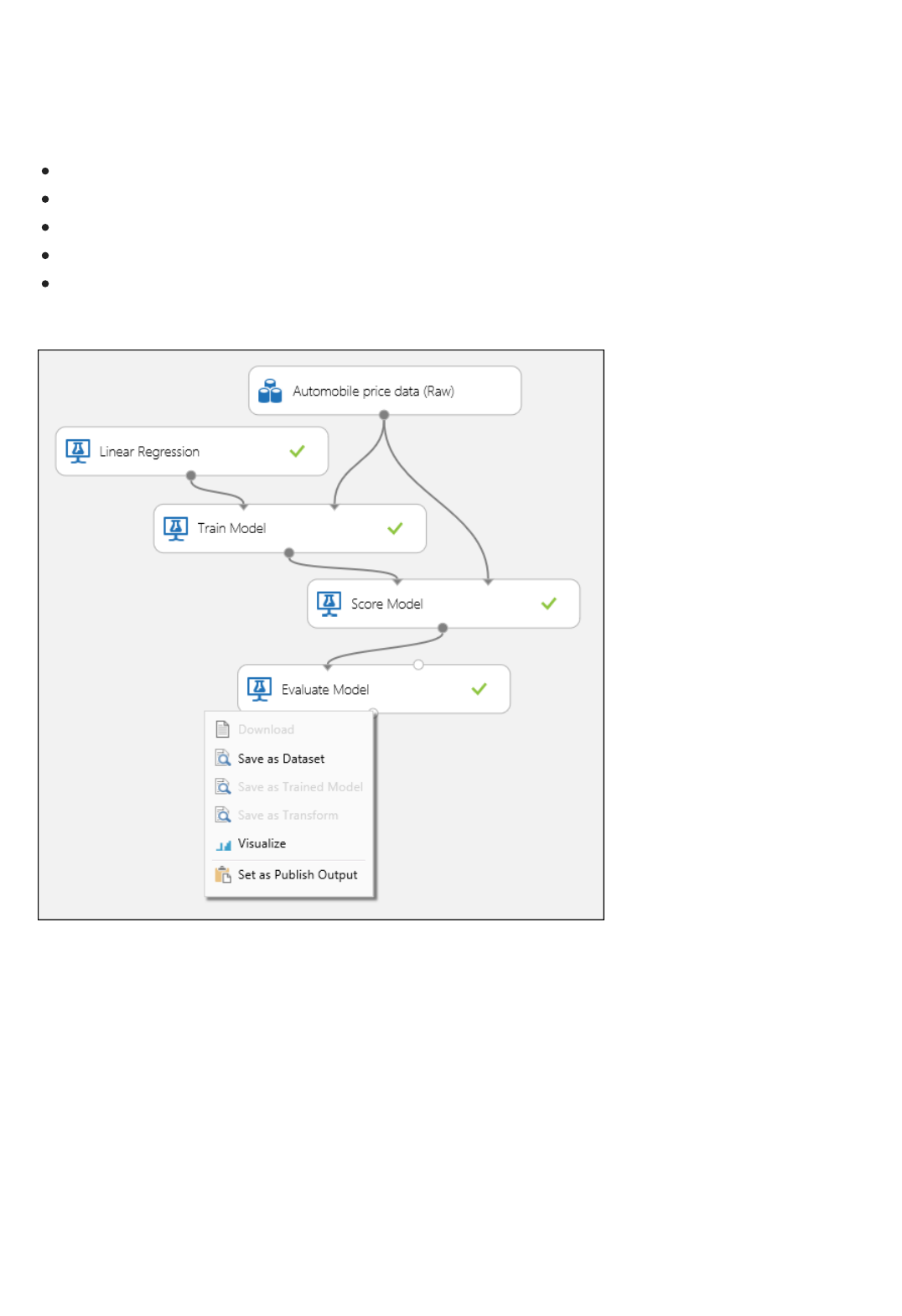

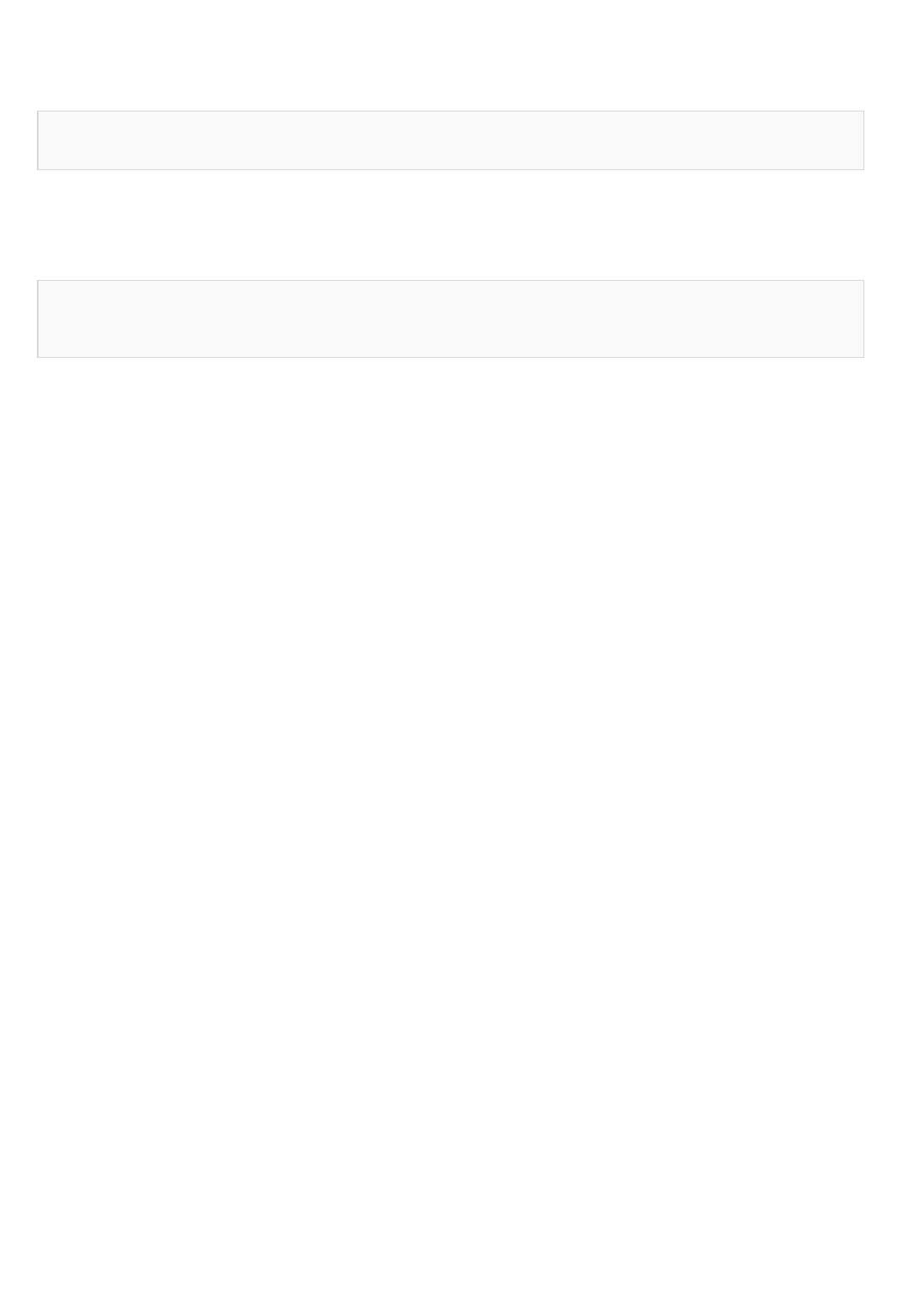

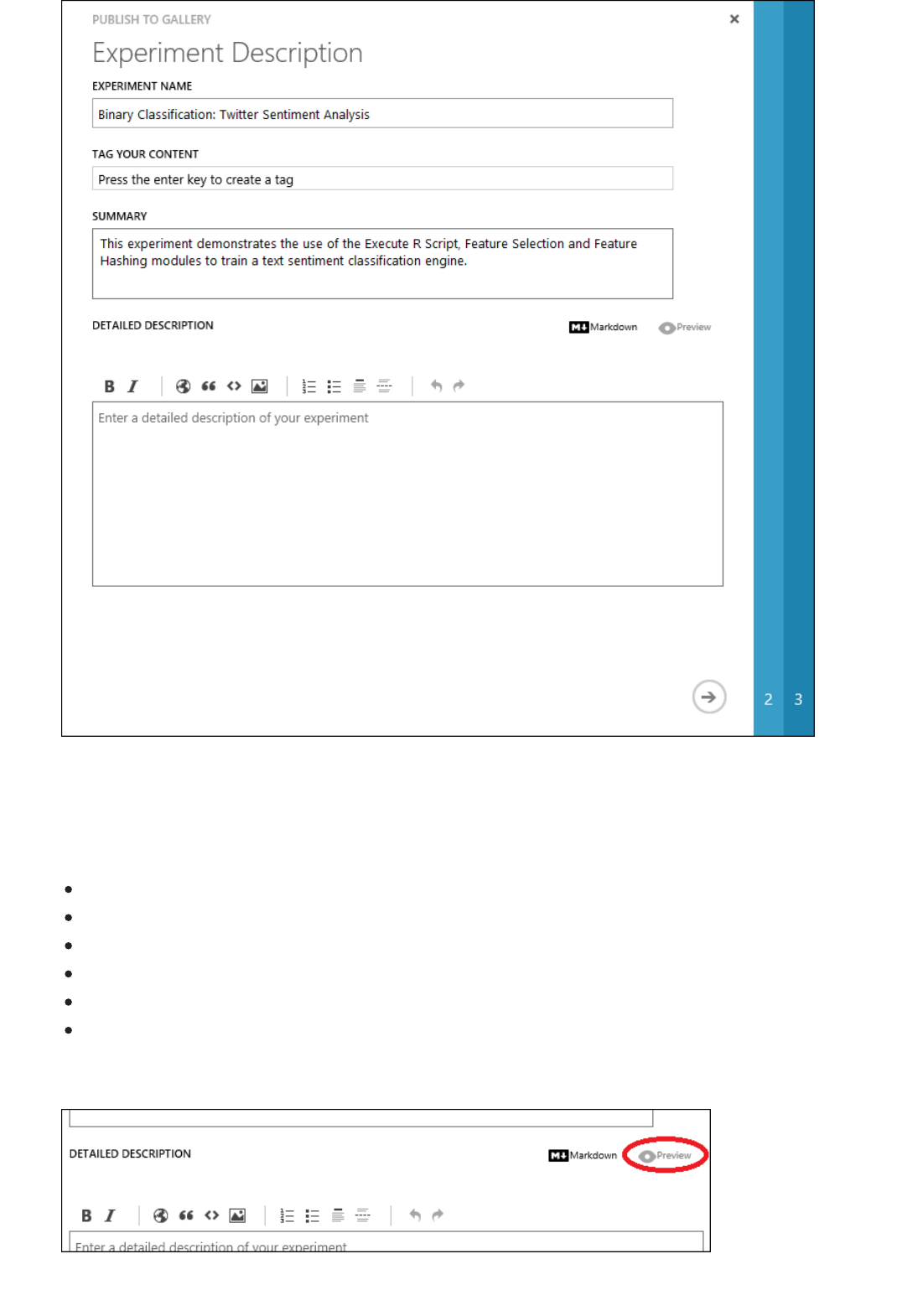

After running, the experiment should now look something like this

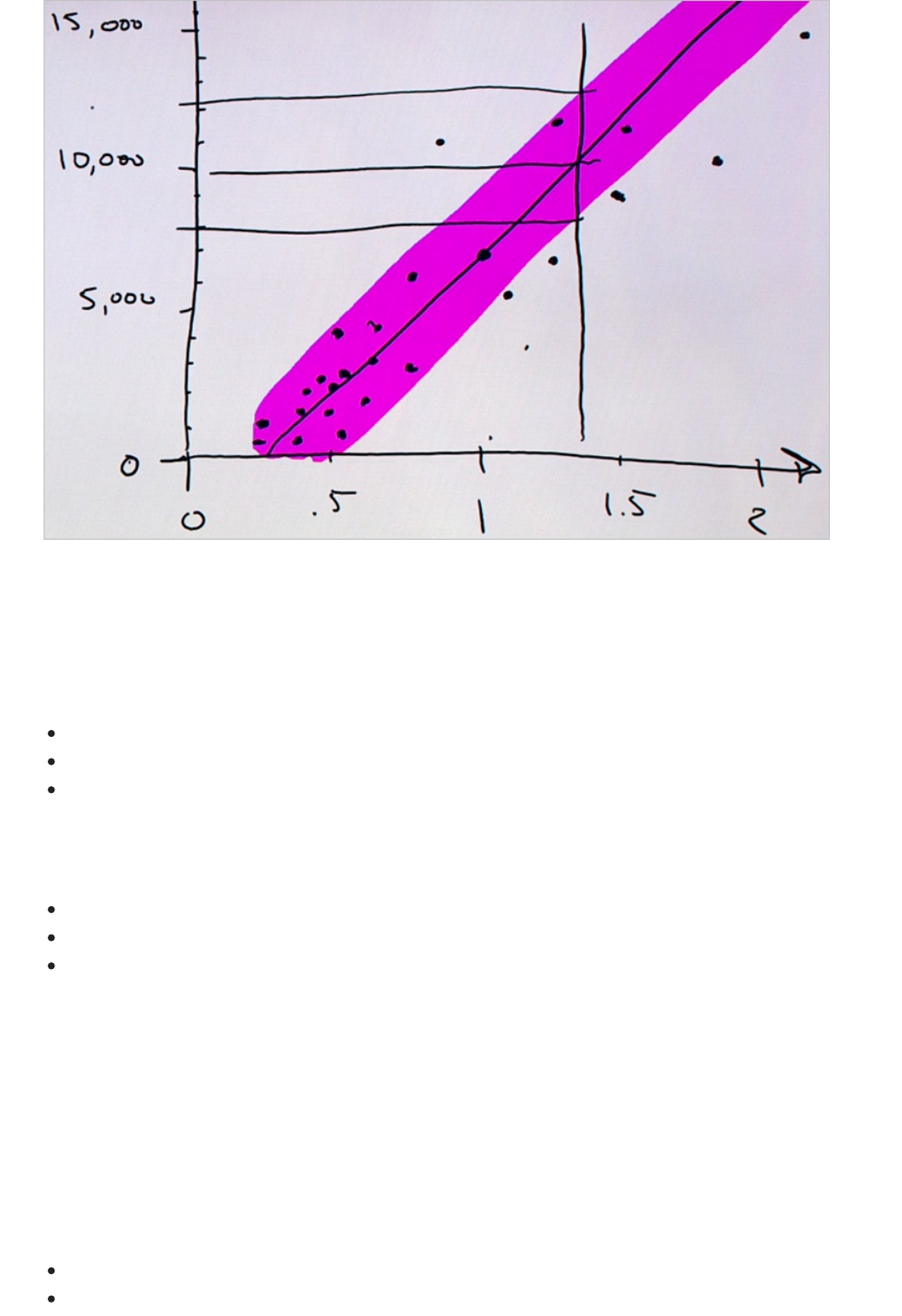

Now that we've trained the model using 75 percent of our data, we can use it to score the other 25 percent of the

data to see how well our model functions.

1. Find and drag the Score Model module to the experiment canvas. Connect the output of the Train Model

module to the left input port of Score Model. Connect the test data output (right port) of the Split Data

module to the right input port of Score Model.

Connect the "Score Model" module to both the "Train Model" and "Split Data" modules

TIPTIP

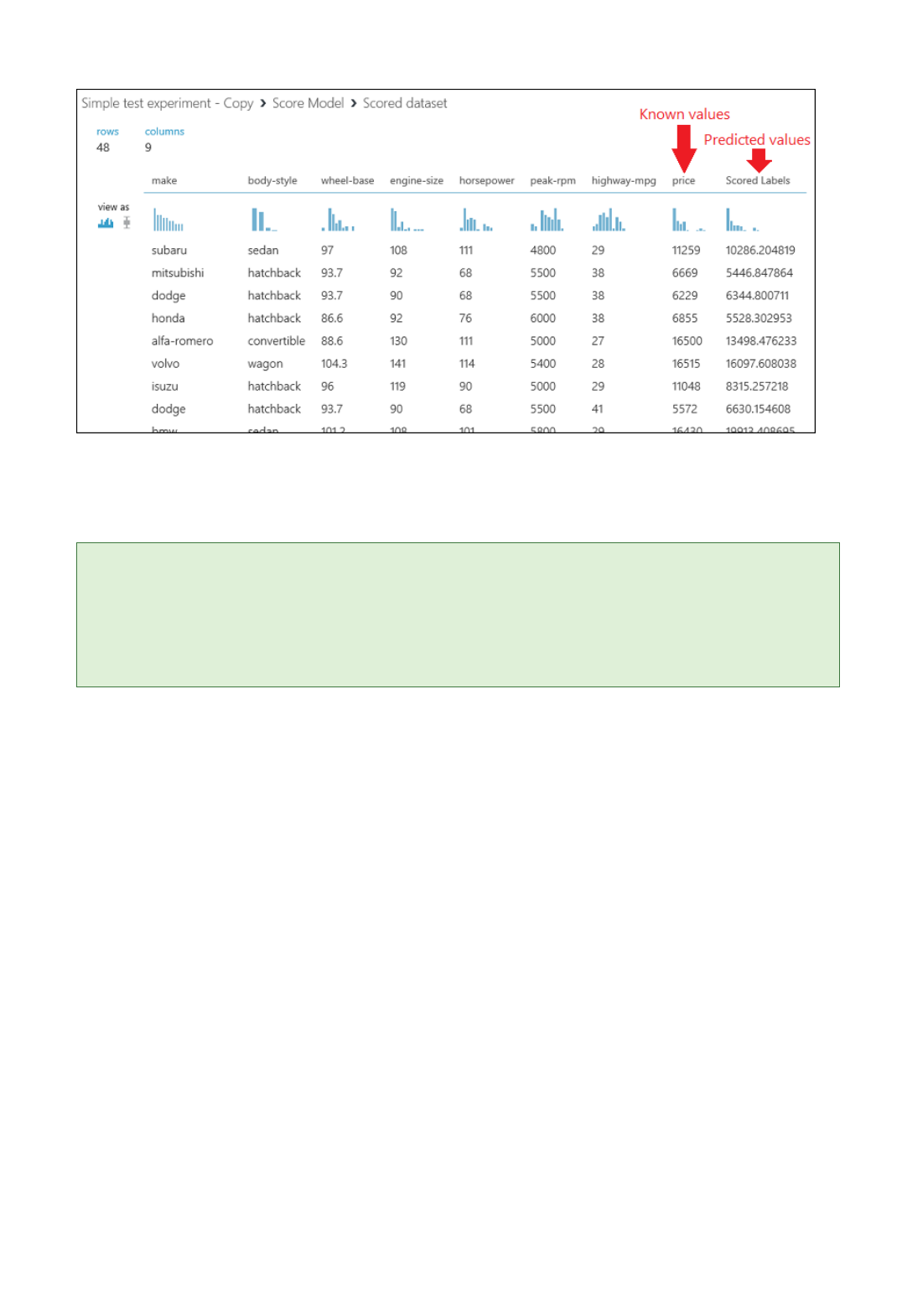

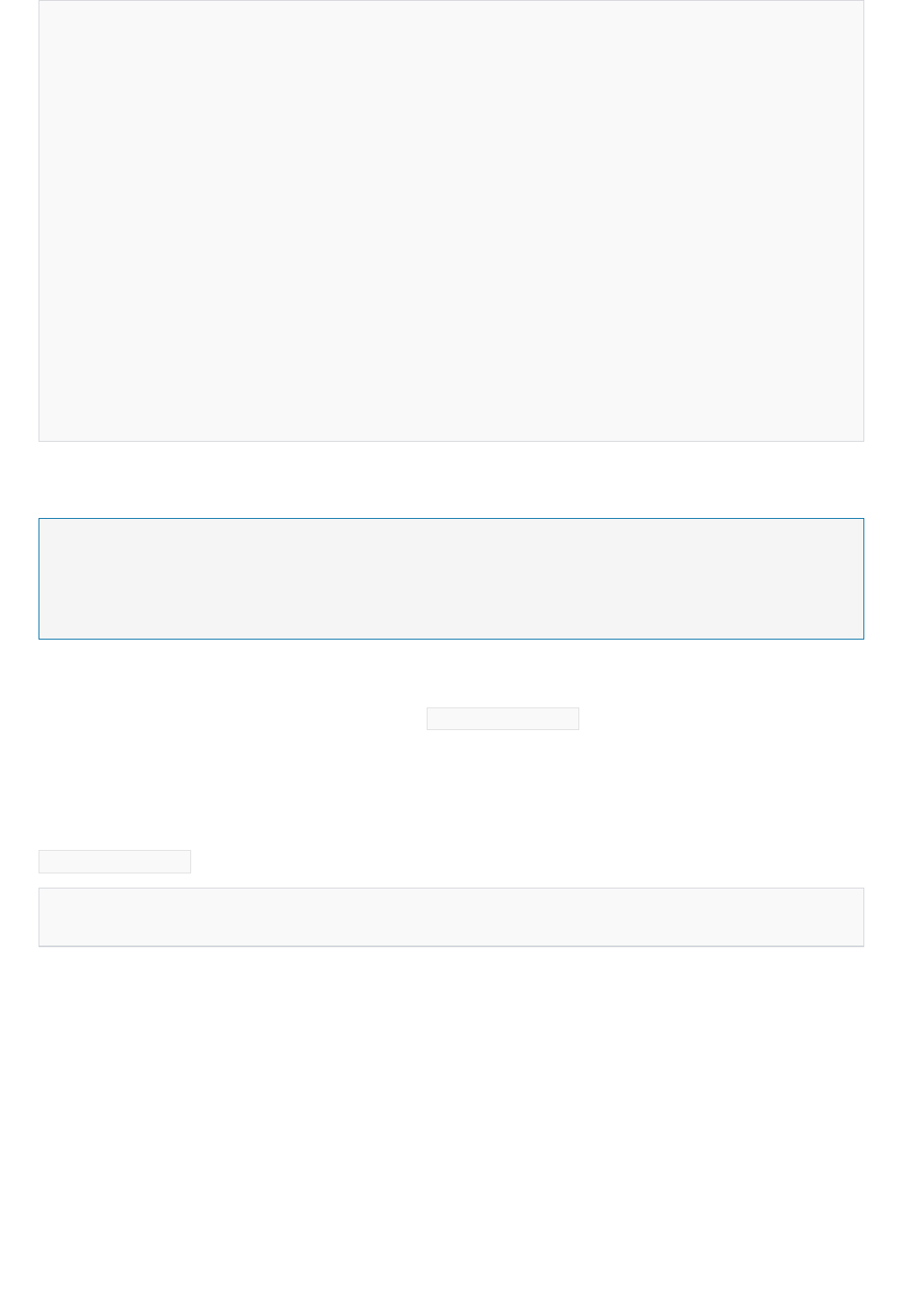

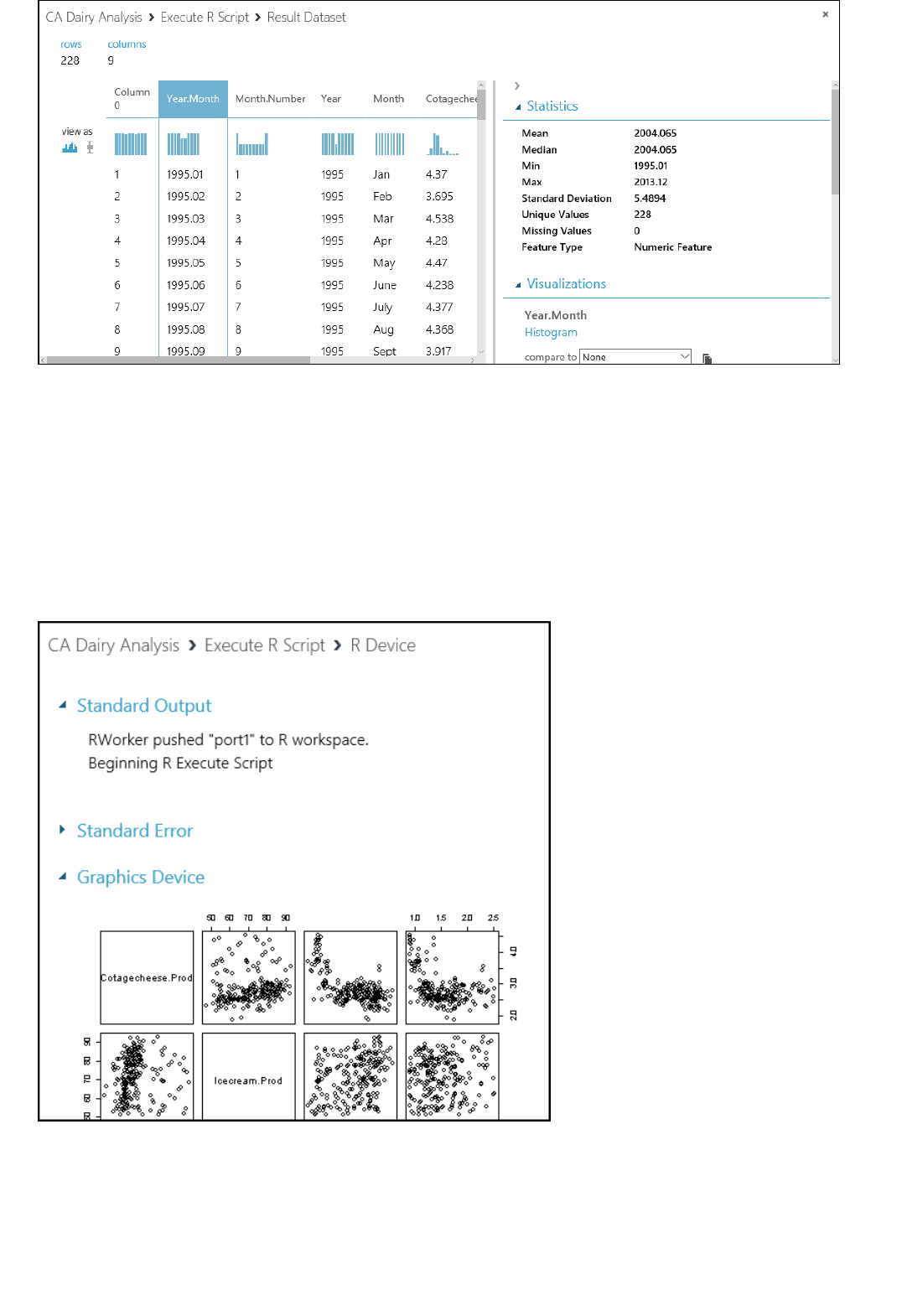

2. Run the experiment and view the output from the Score Model module (click the output port of Score

Model and select Visualize). The output shows the predicted values for price and the known values from

the test data.

Output of the "Score Model" module

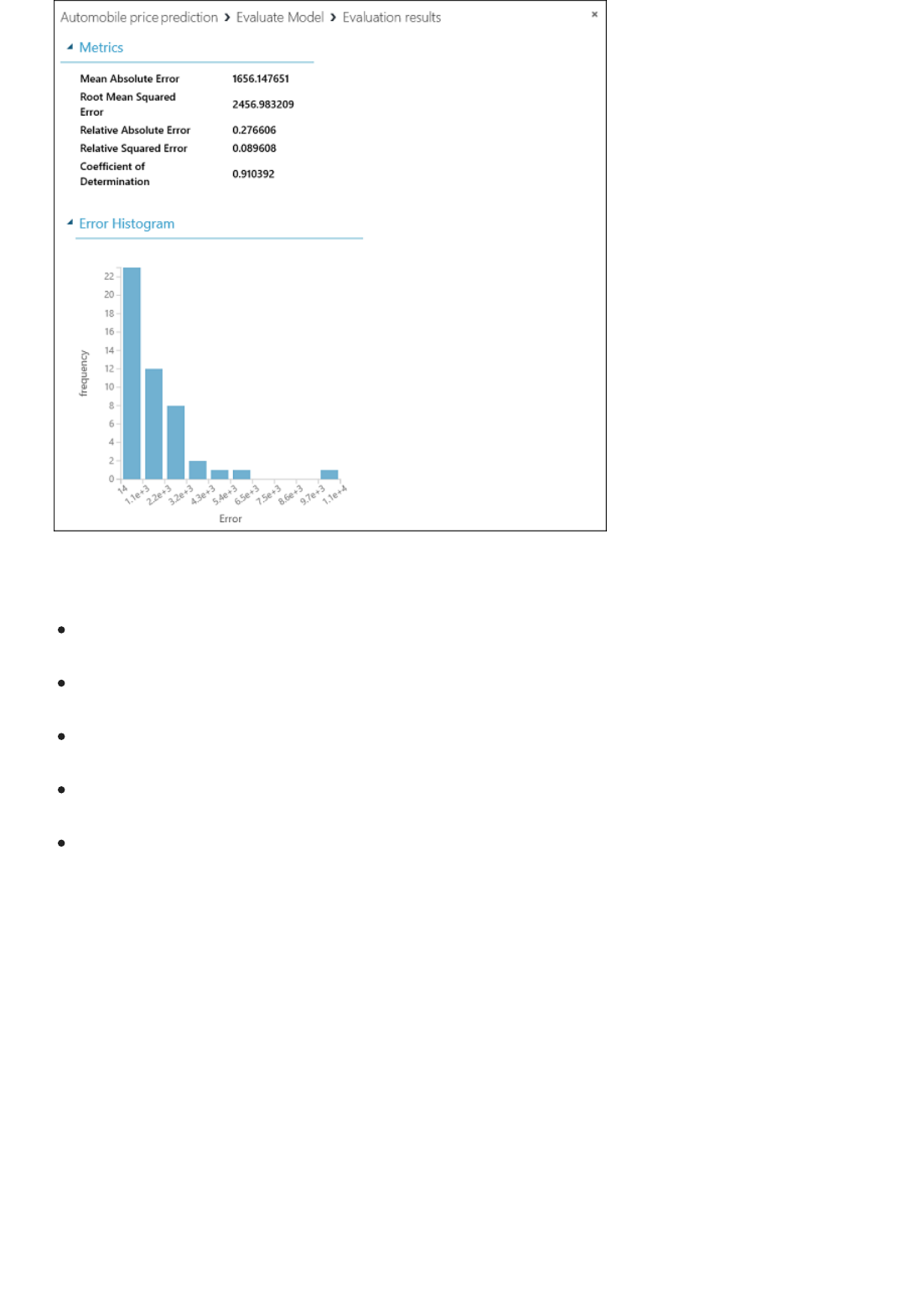

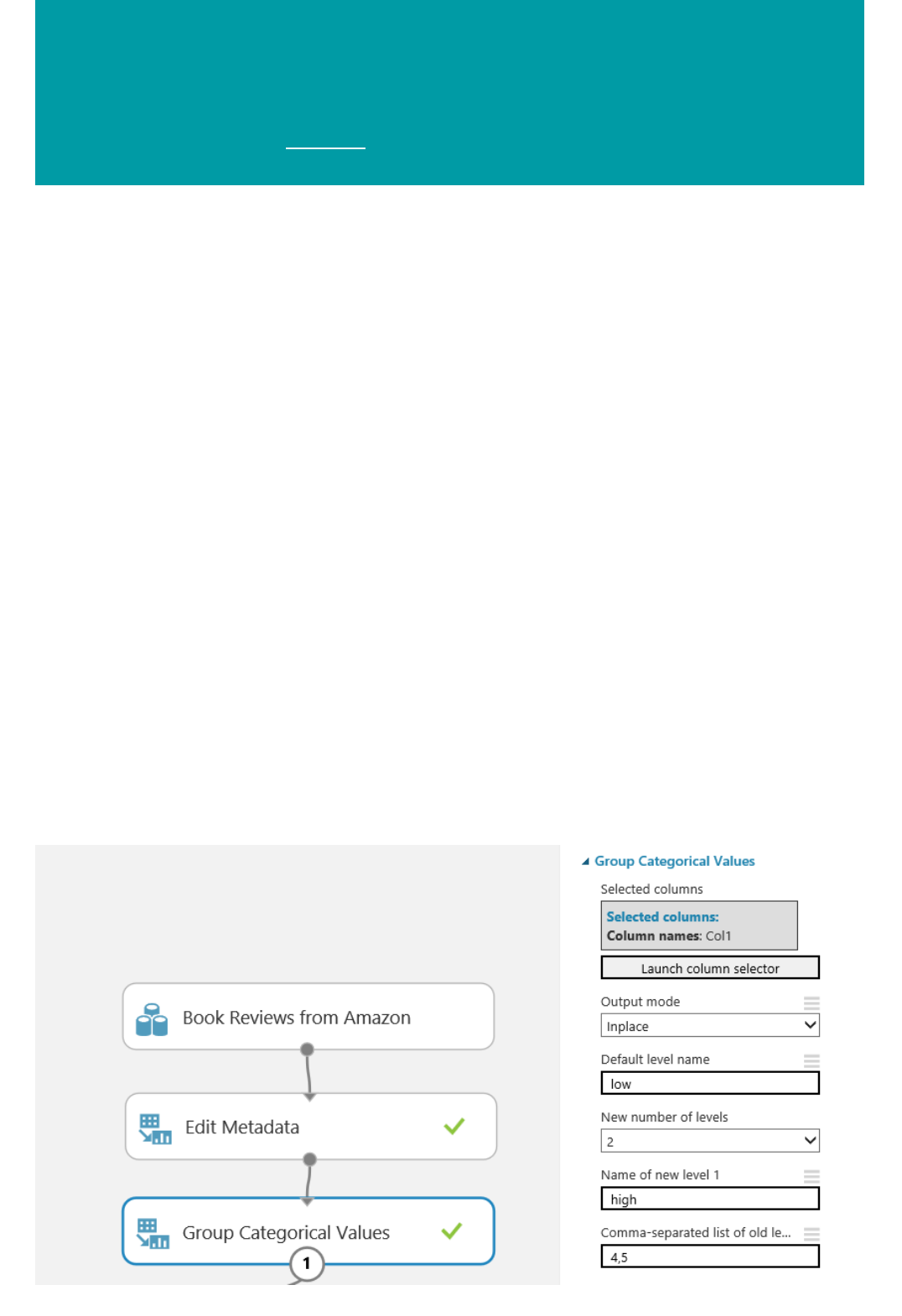

3. Finally, we test the quality of the results. Select and drag the Evaluate Model module to the experiment

canvas, and connect the output of the Score Model module to the left input of Evaluate Model.

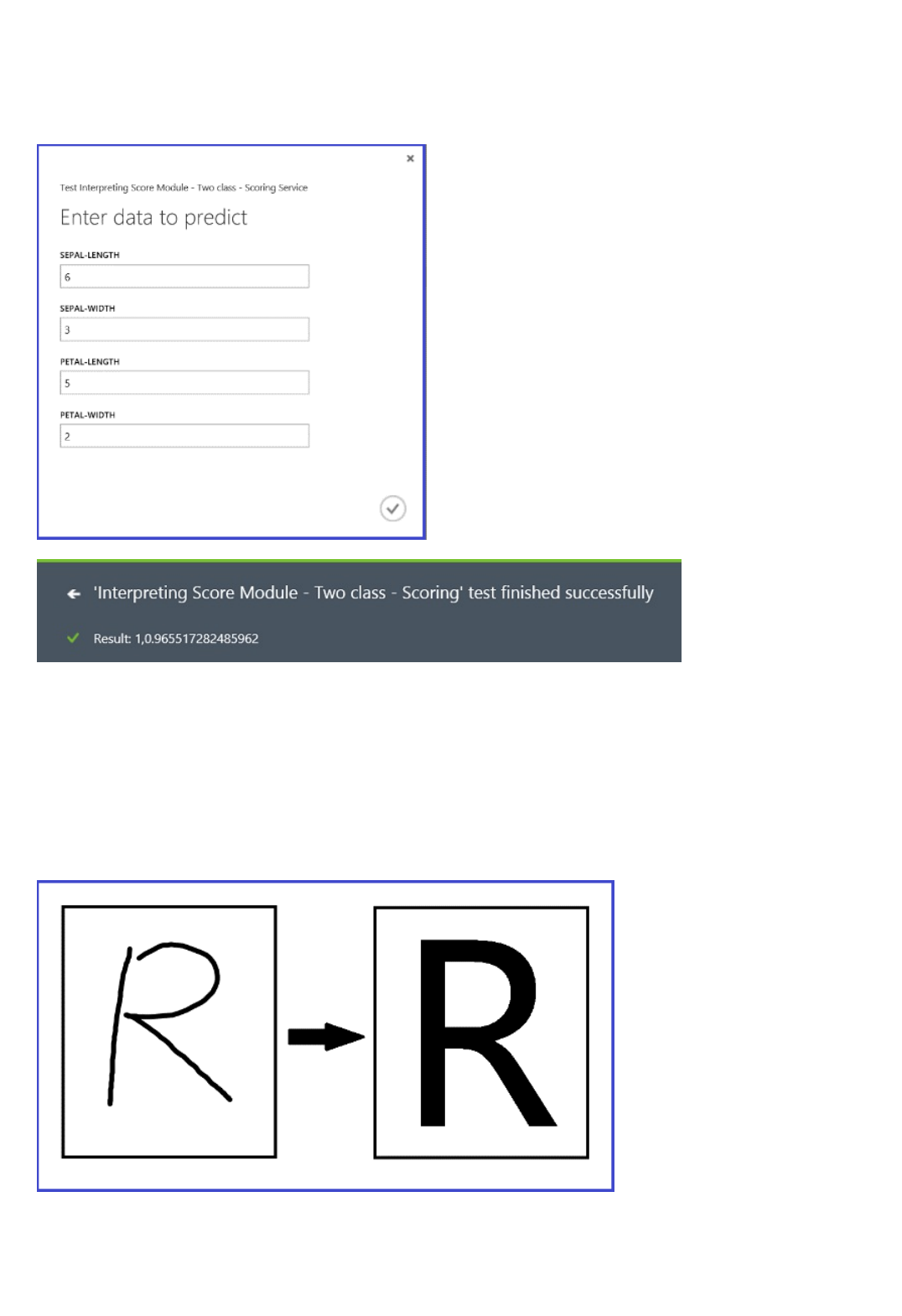

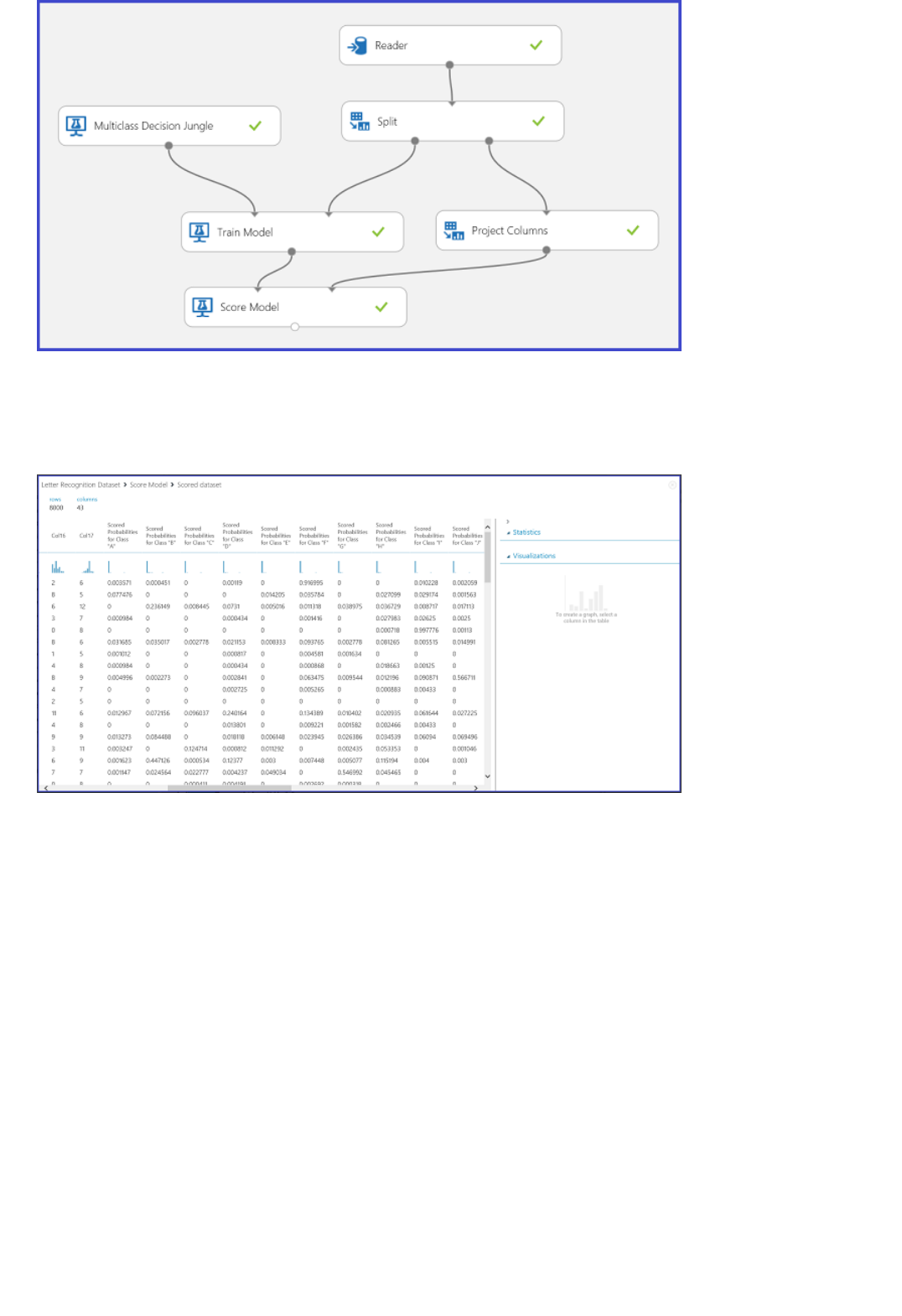

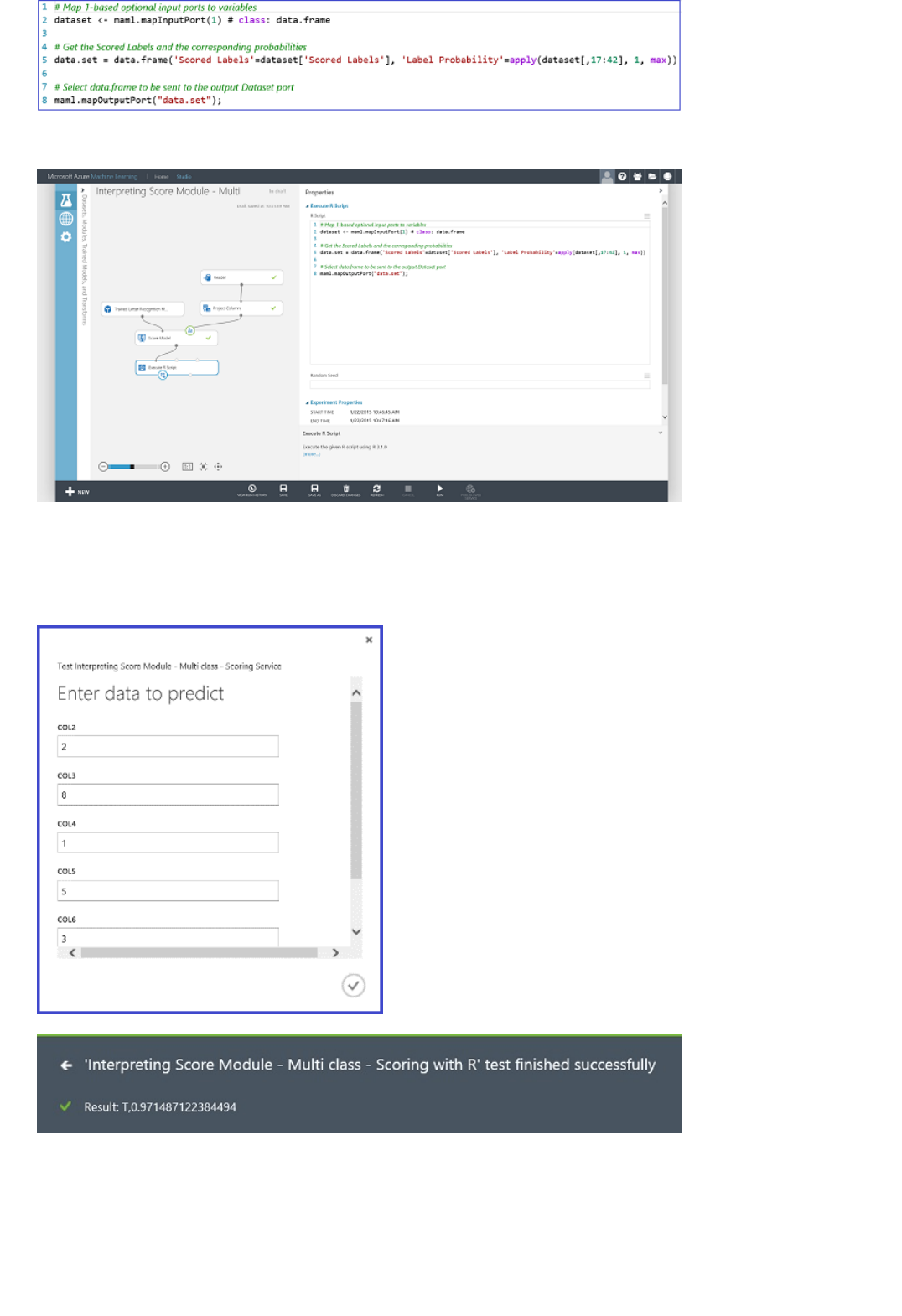

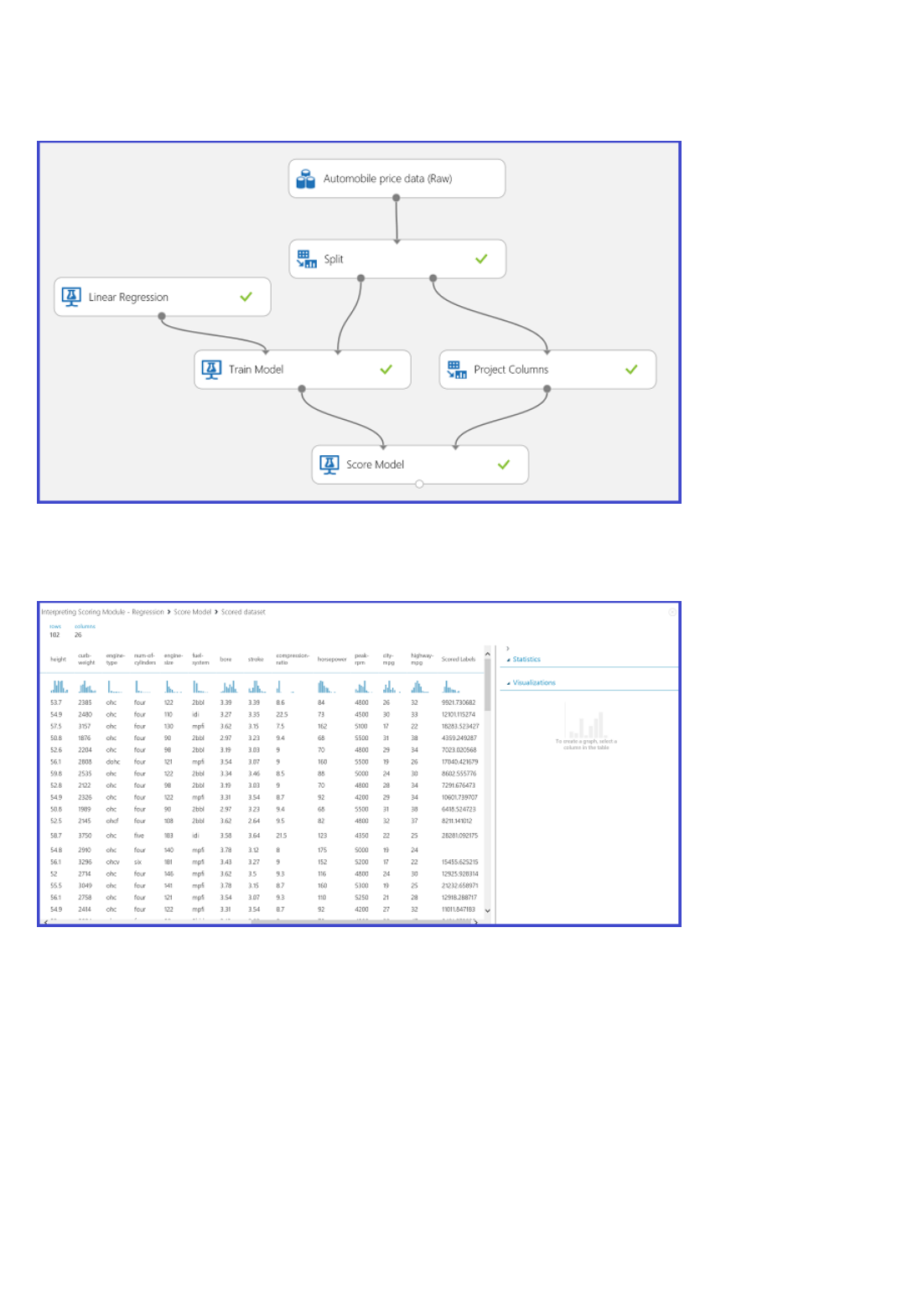

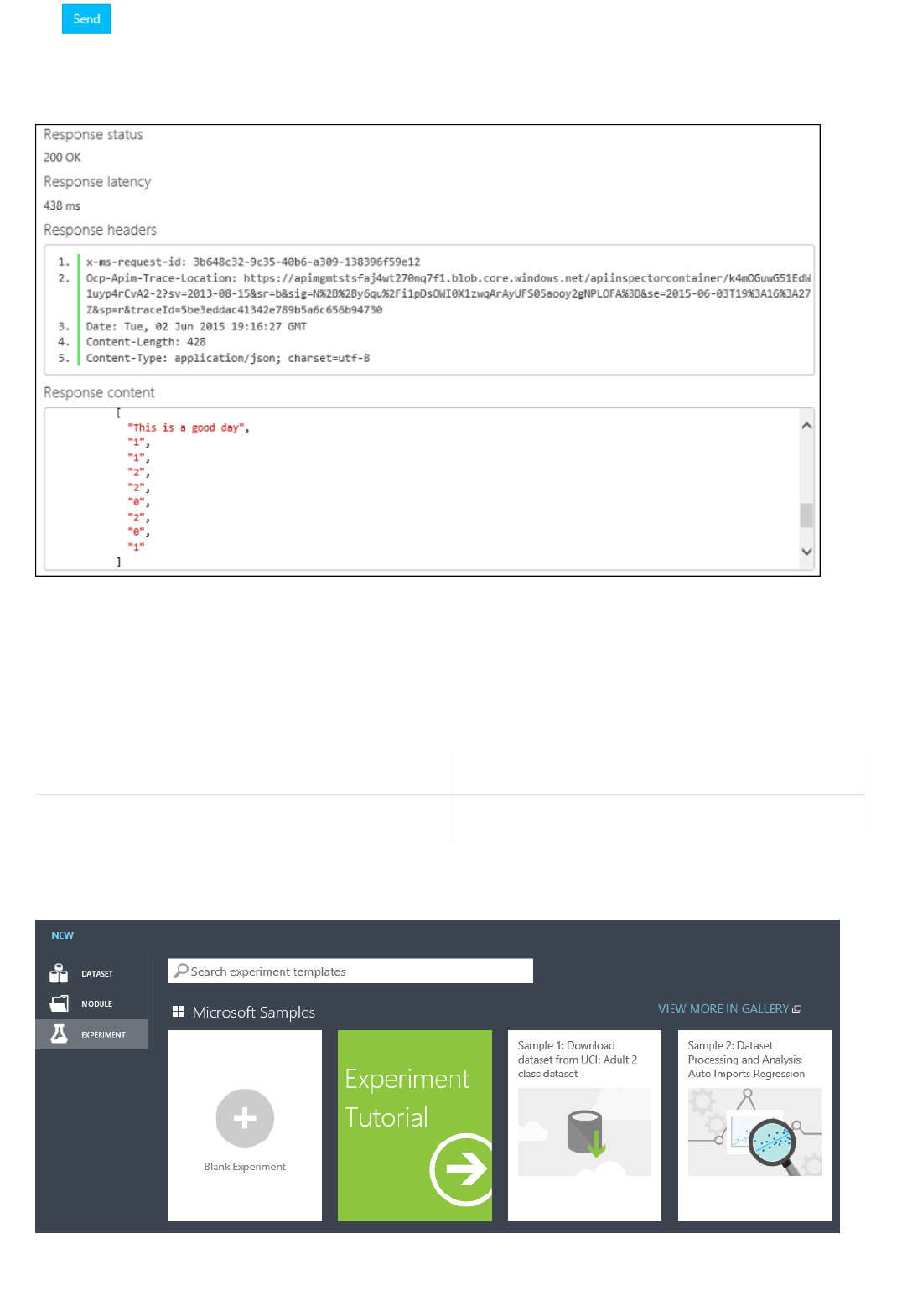

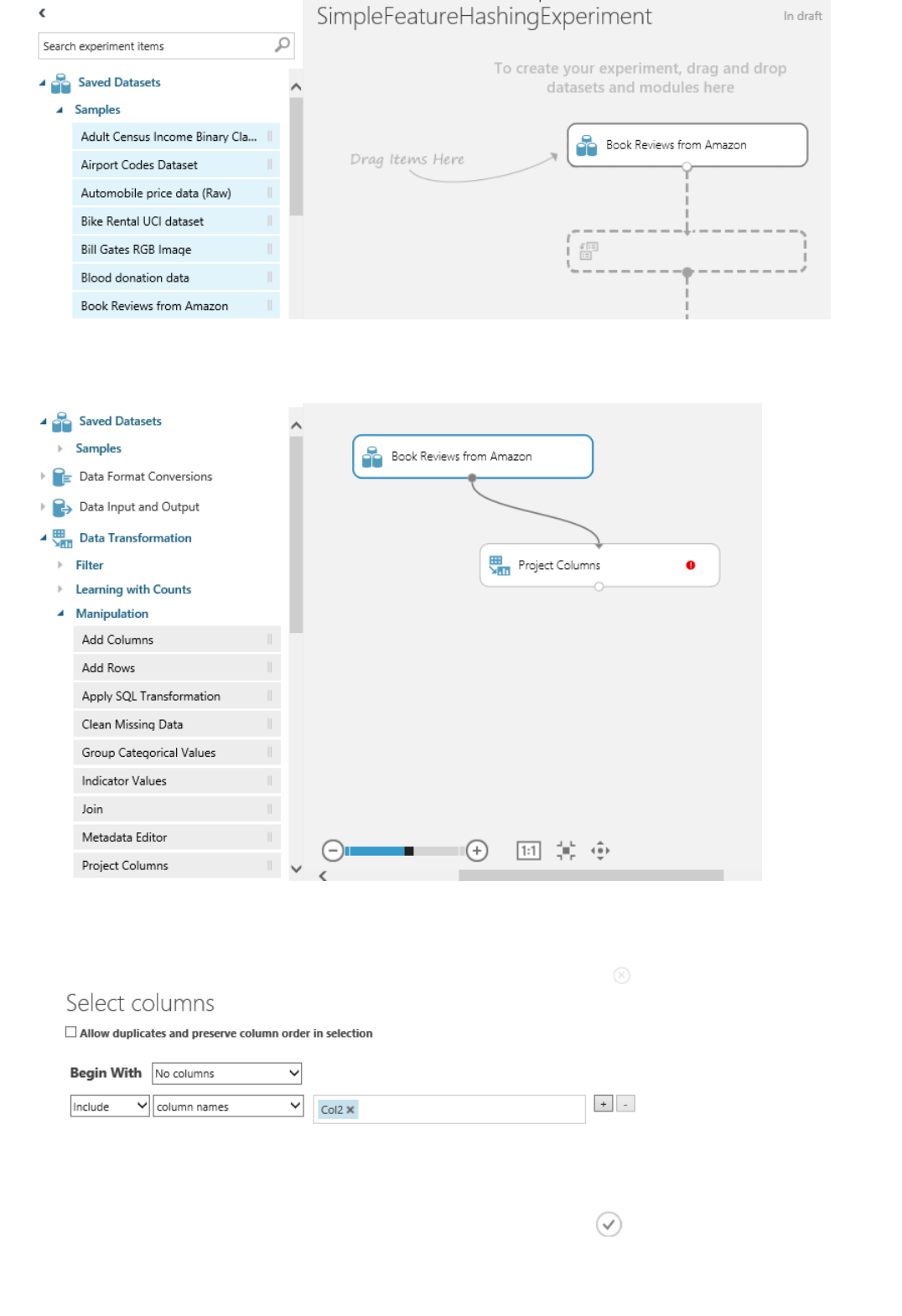

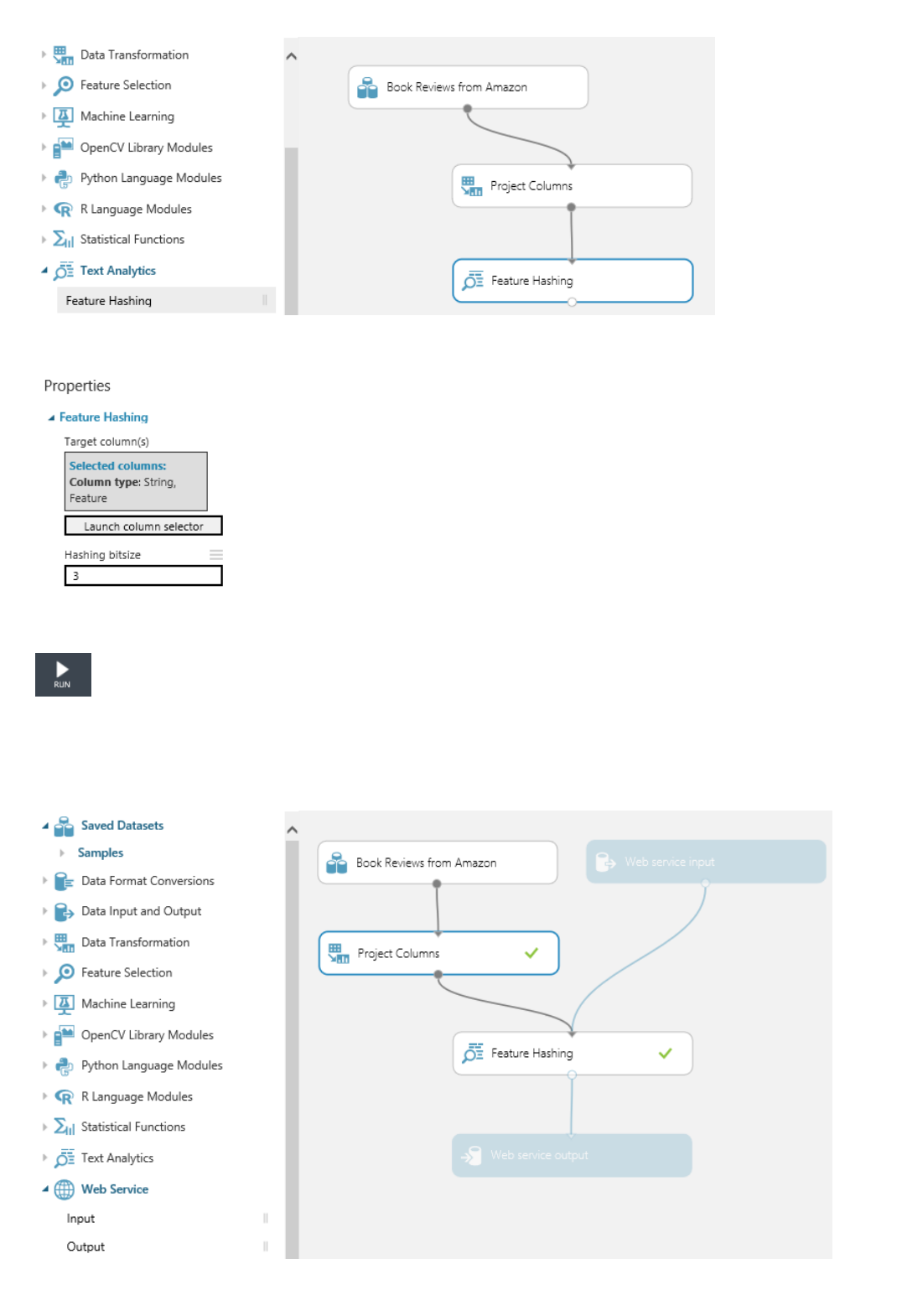

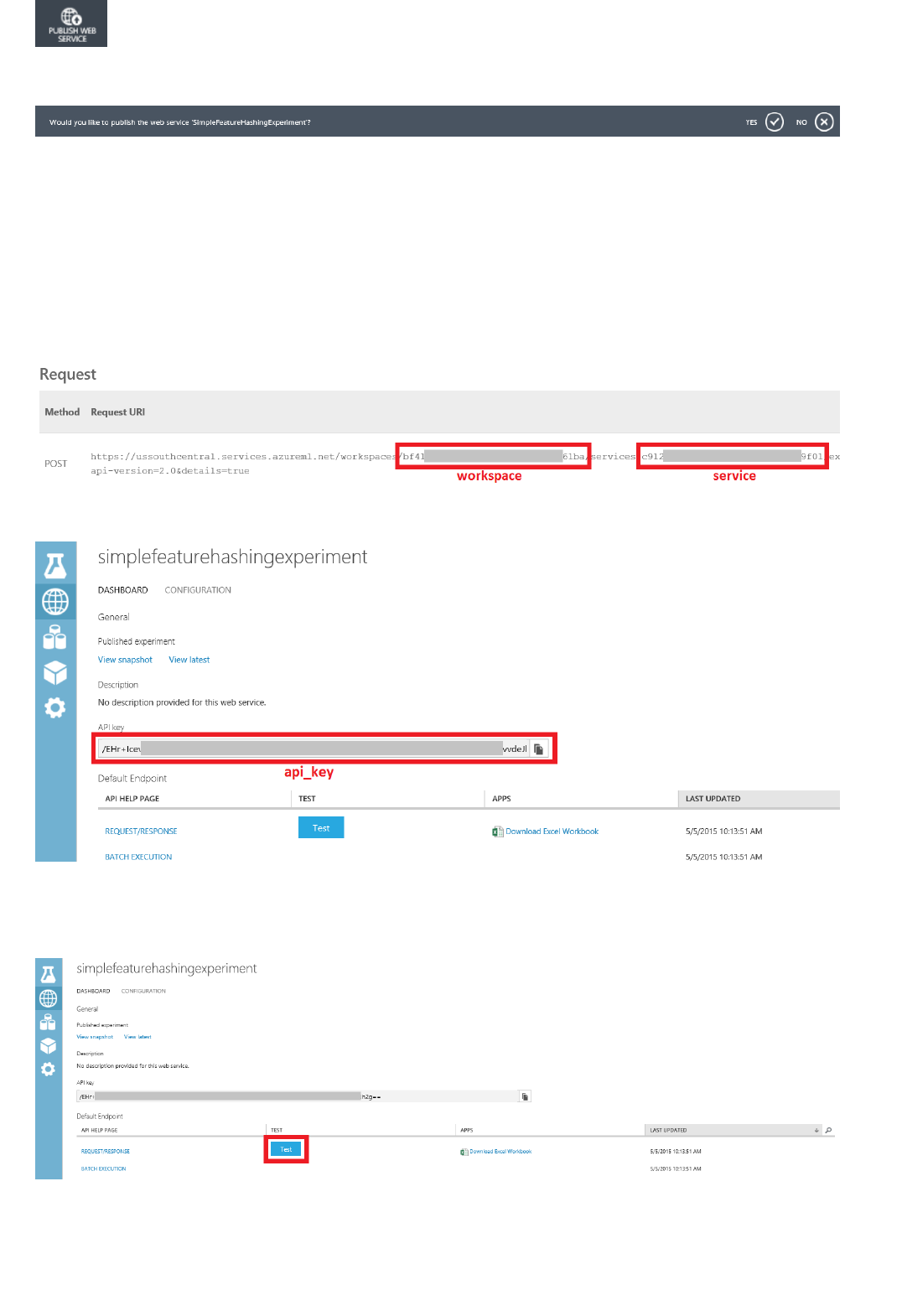

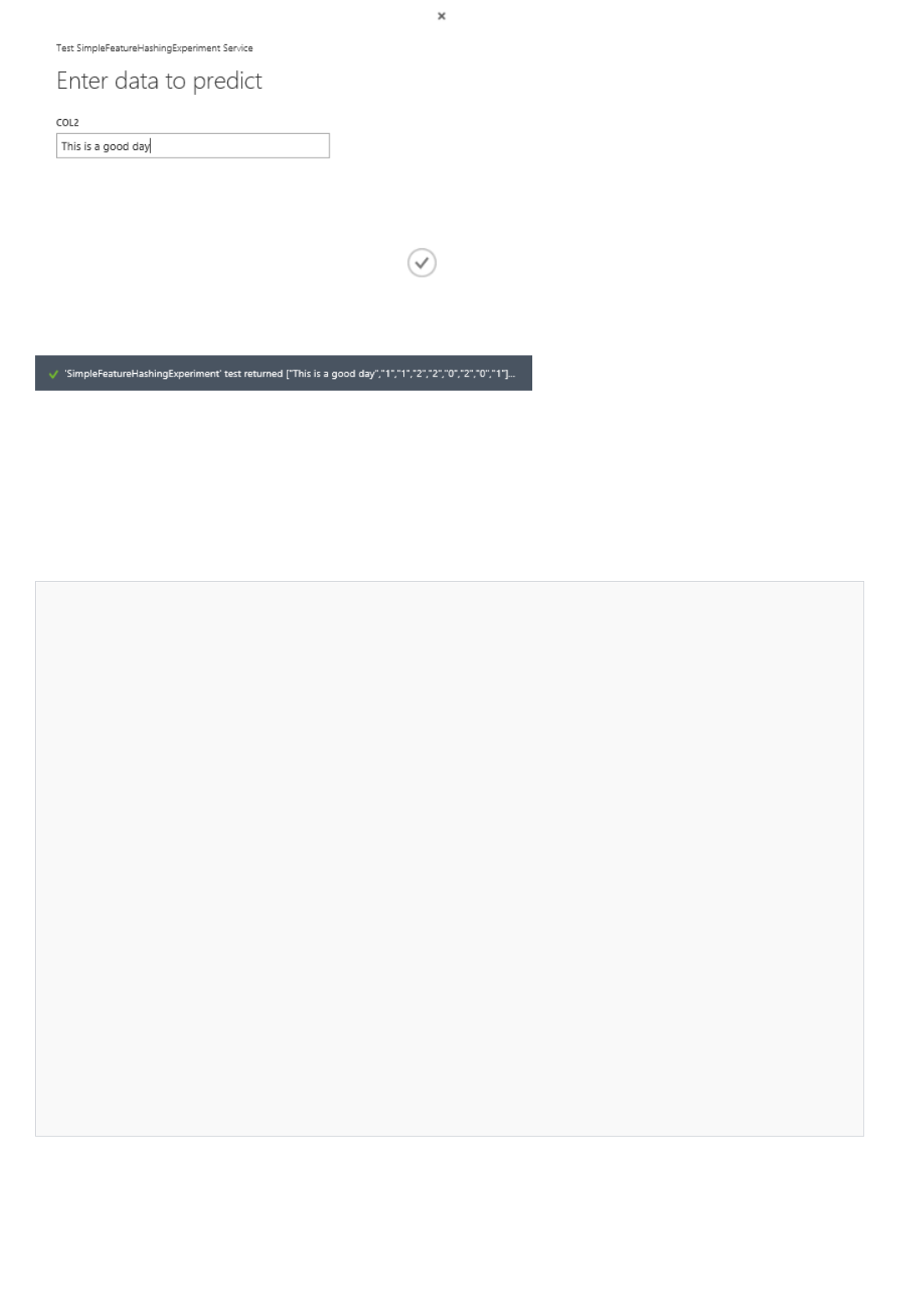

There are two input ports on the Evaluate Model module because it can be used to compare two models side by