Mongo DB Admin Guide

MongoDB Administrator Training

Release 3.4

MongoDB, Inc.

Nov 15, 2017

Contents

1Introduction 4

1.1 Warm Up ................................................. 4

1.2 MongoDB - The Company ....................................... 5

1.3 MongoDB Overview ........................................... 5

1.4 MongoDB Stores Documents ..................................... 8

1.5 MongoDB Data Types ......................................... 11

1.6 Lab: Installing and Configuring MongoDB .............................. 14

2Storage 18

2.1 Introduction to Storage Engines .................................... 18

3CRUD 24

3.1 Creating and Deleting Documents .................................. 24

3.2 Reading Documents .......................................... 29

3.3 Query Operators ............................................ 36

3.4 Lab: Finding Documents ........................................ 40

3.5 Updating Documents .......................................... 40

3.6 Lab: Updating Documents ....................................... 49

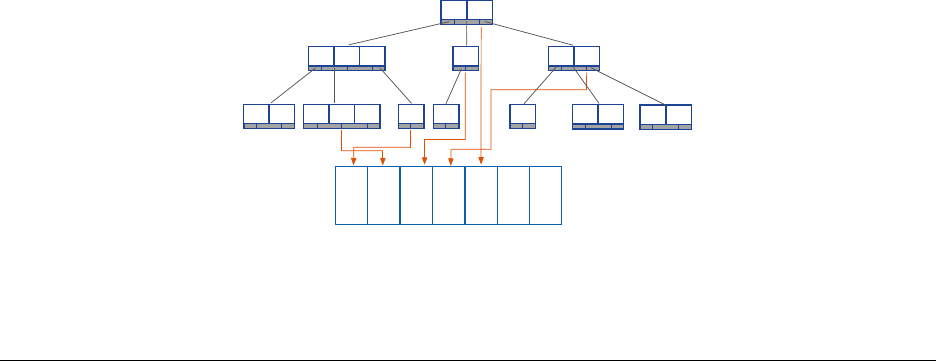

4Indexes 50

4.1 Index Fundamentals .......................................... 50

4.2 Lab: Basic Indexes ........................................... 56

4.3 Compound Indexes ........................................... 57

4.4 Lab: Optimizing an Index ........................................ 62

4.5 Multikey Indexes ............................................. 63

4.6 Hashed Indexes ............................................. 67

4.7 Geospatial Indexes ........................................... 68

4.8 Using Compass with Indexes ..................................... 75

4.9 TTL Indexes ............................................... 79

4.10 Text Indexes ............................................... 81

4.11 Partial Indexes .............................................. 83

4.12 Lab: Finding and Addressing Slow Operations ........................... 86

4.13 Lab: Using explain() ........................................ 87

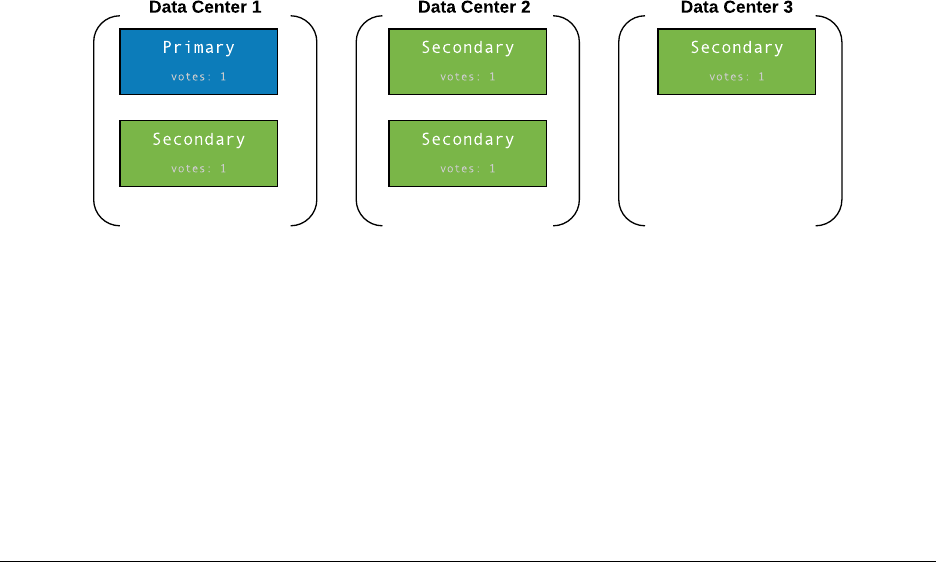

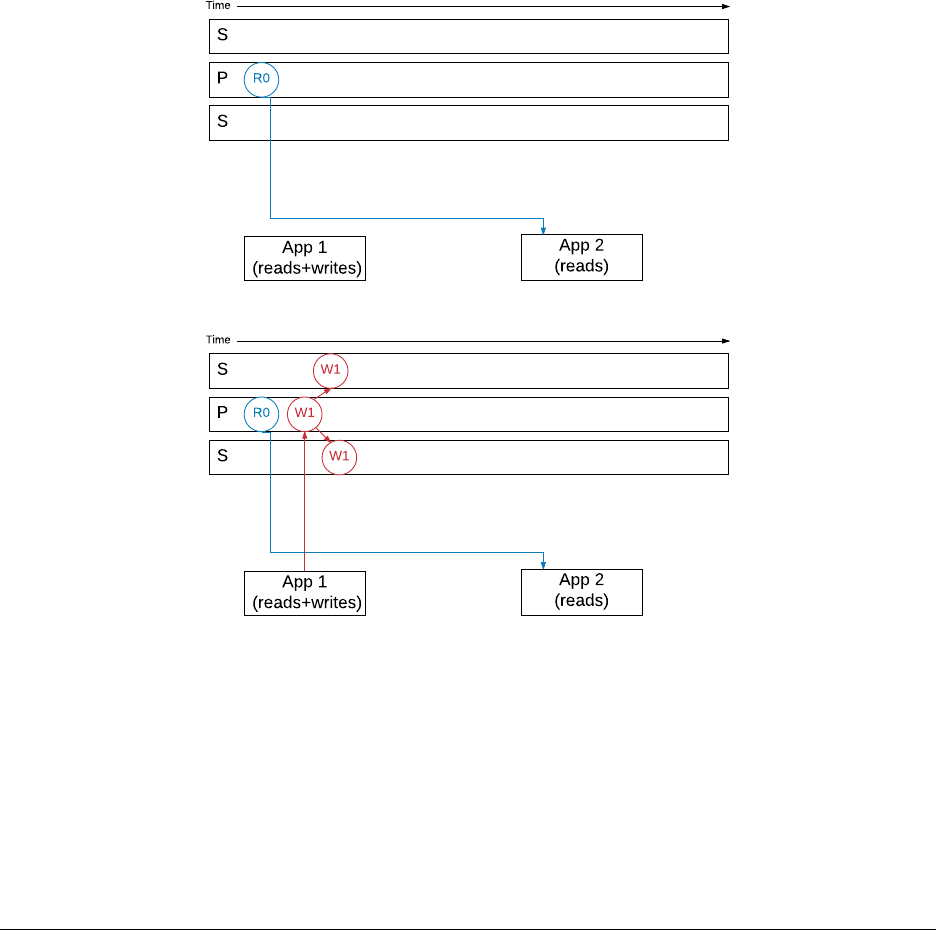

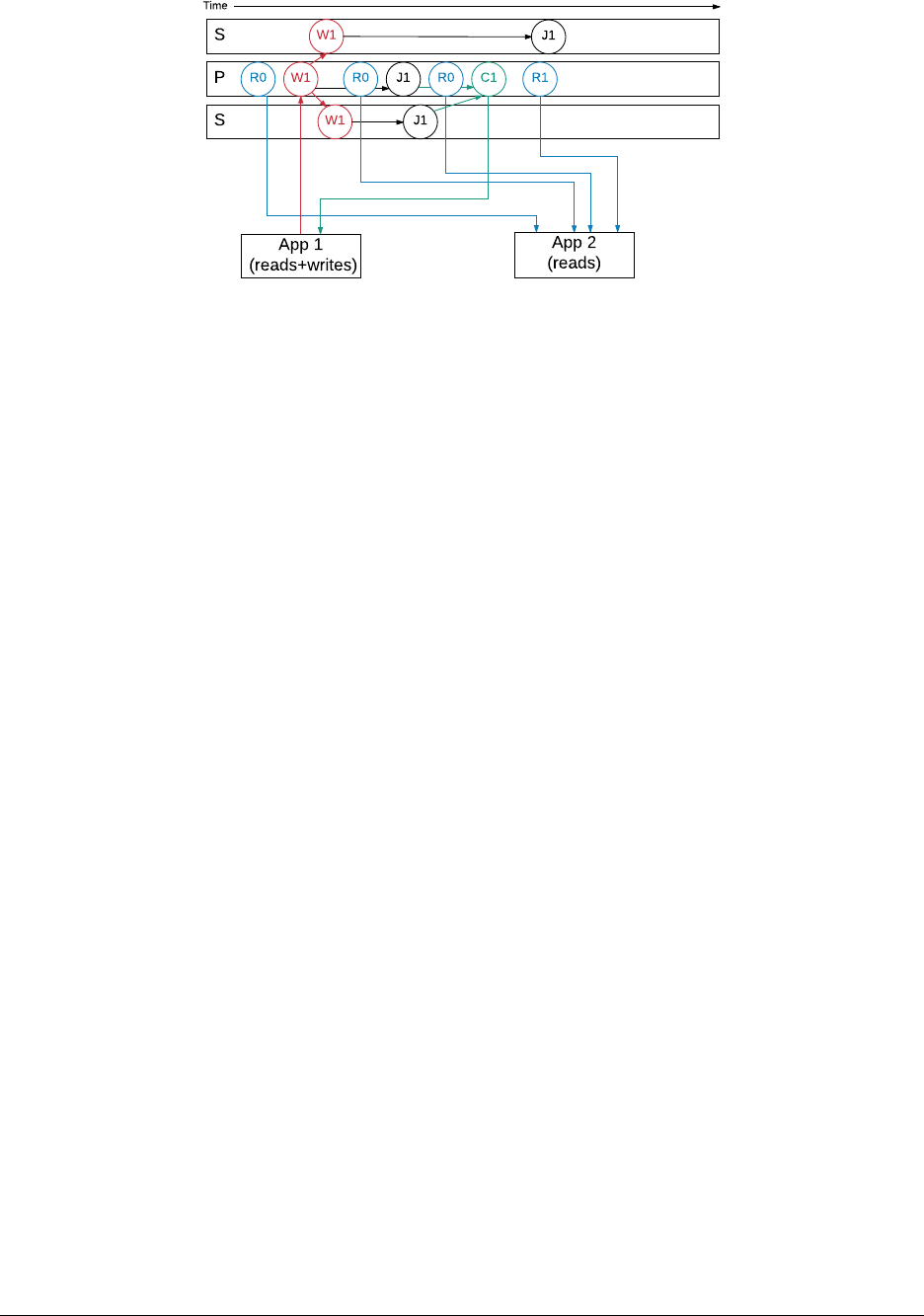

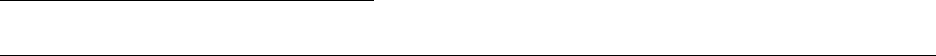

5ReplicaSets 88

5.1 Introduction to Replica Sets ...................................... 88

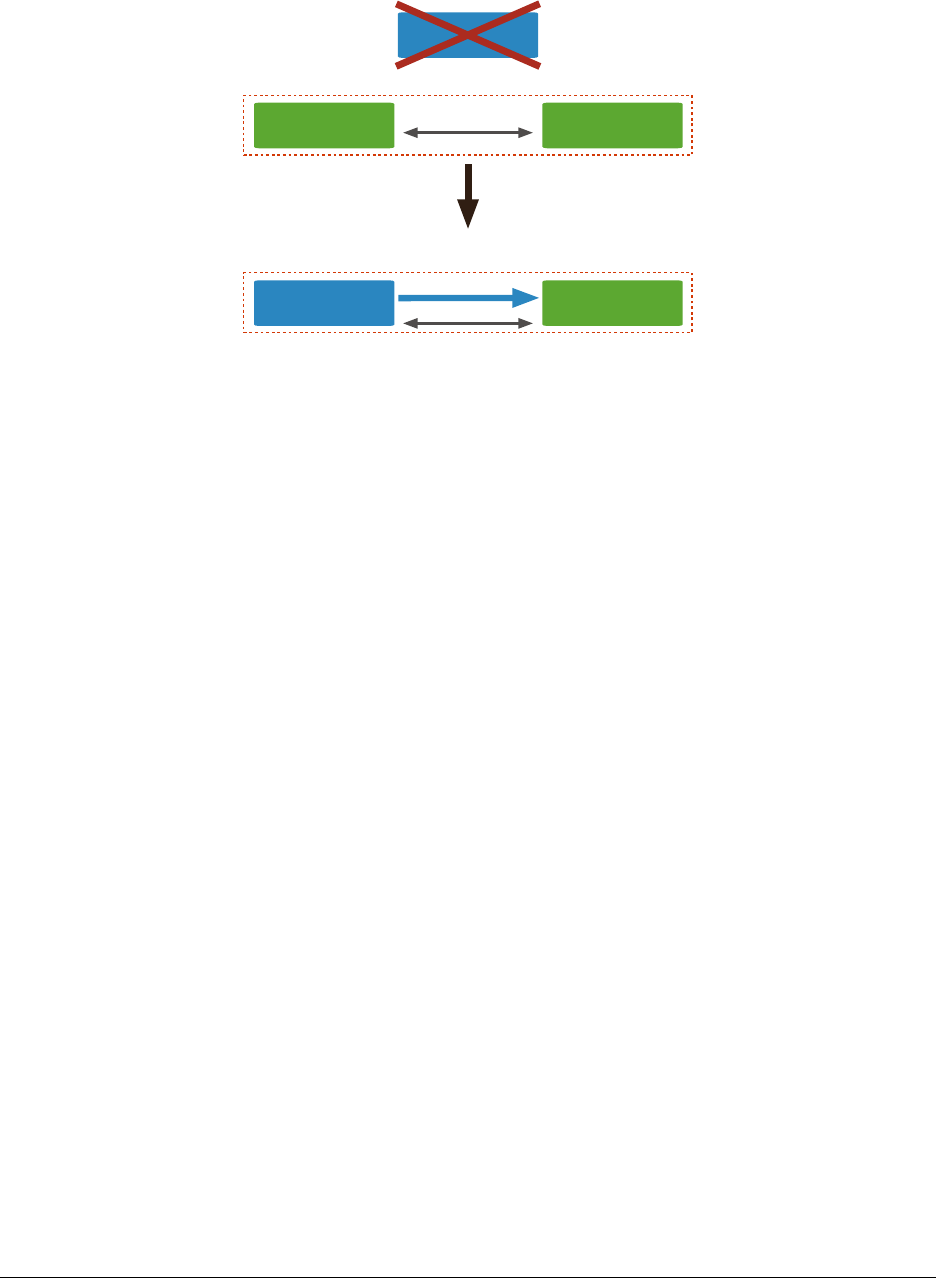

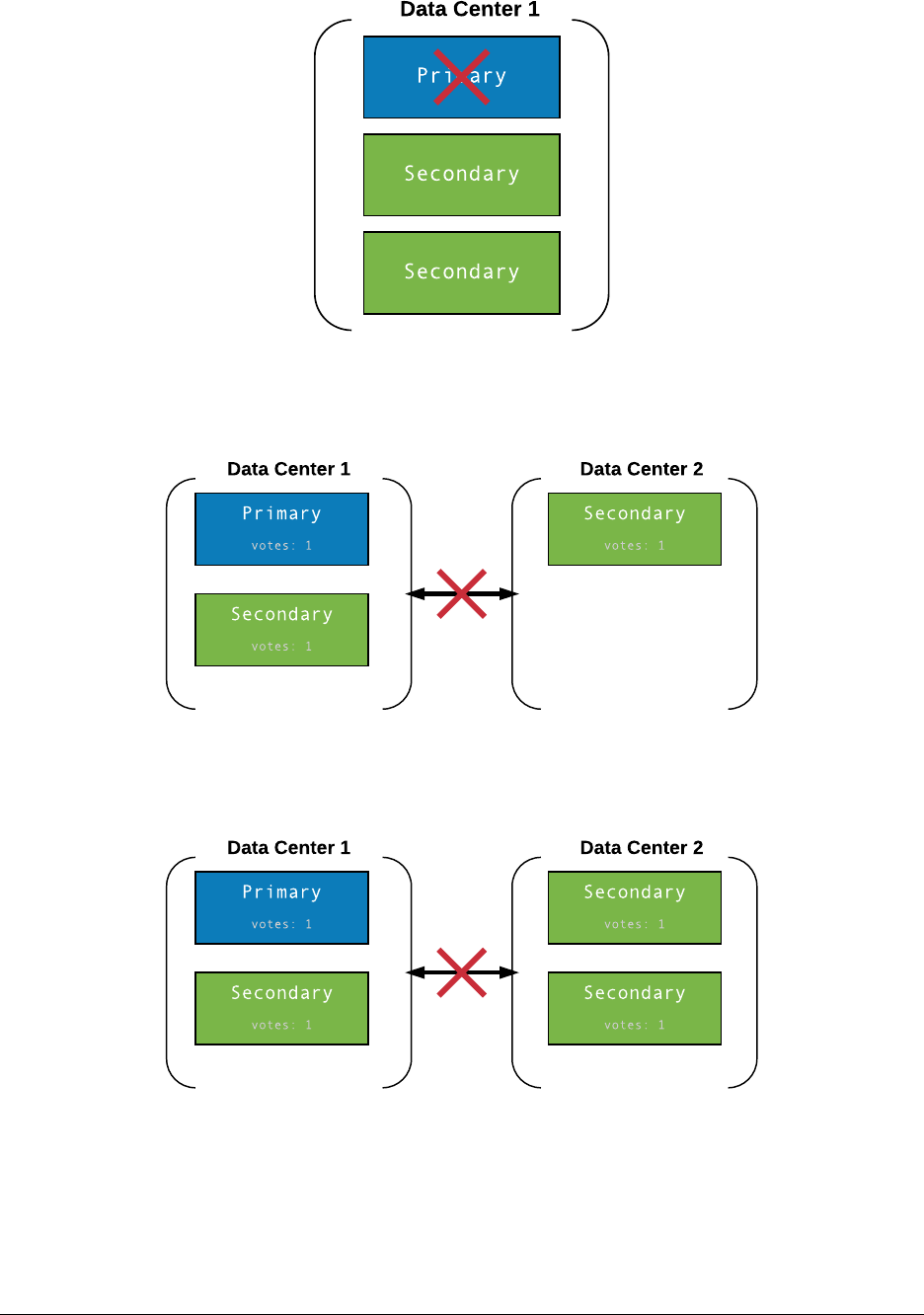

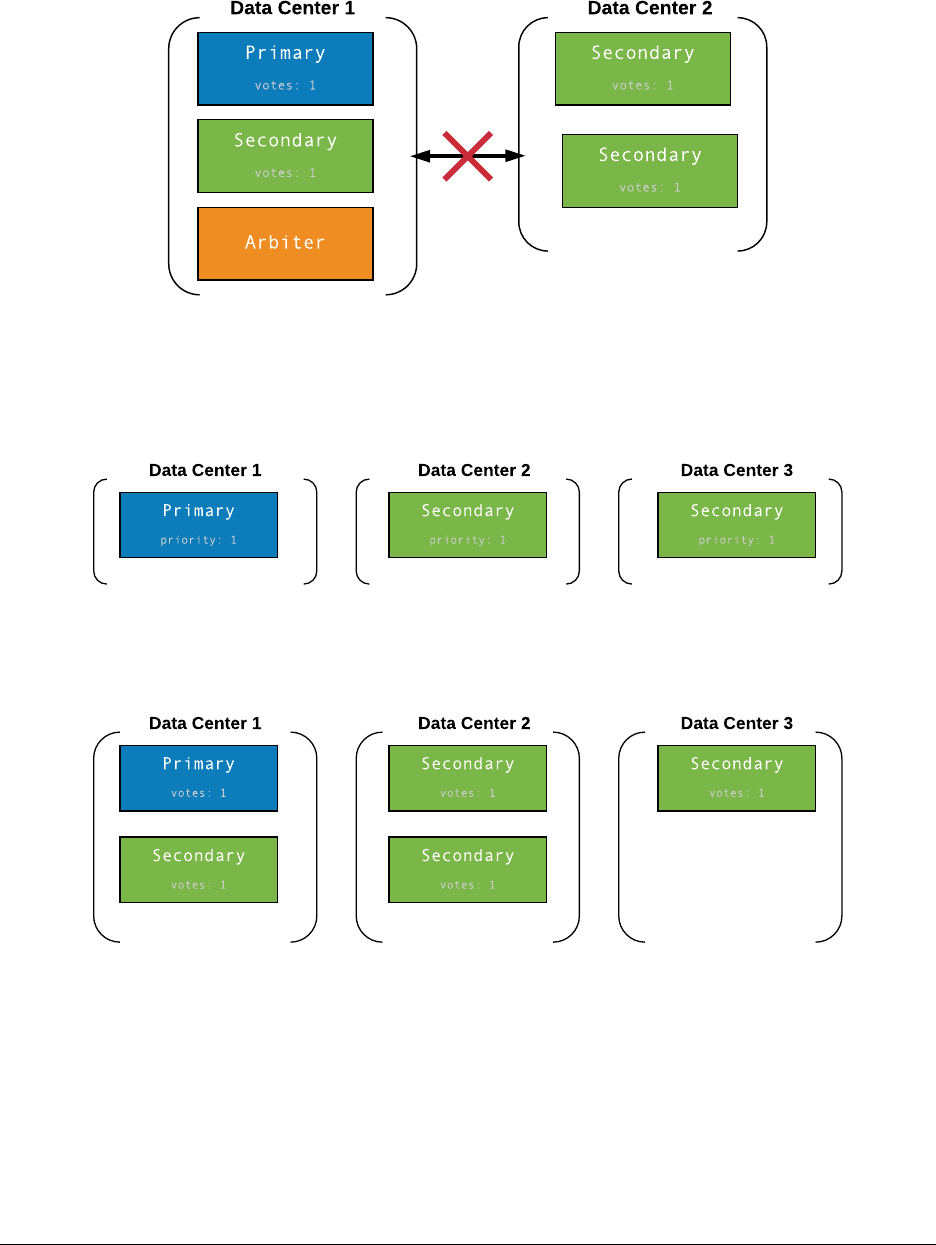

5.2 Elections in Replica Sets ........................................ 91

5.3 Replica Set Roles and Configuration ................................. 96

5.4 The Oplog: Statement Based Replication .............................. 97

1

5.5 Lab: Working with the Oplog ...................................... 99

5.6 Write Concern ..............................................101

5.7 Read Concern ..............................................106

5.8 Read Preference ............................................113

5.9 Lab: Setting up a Replica Set .....................................114

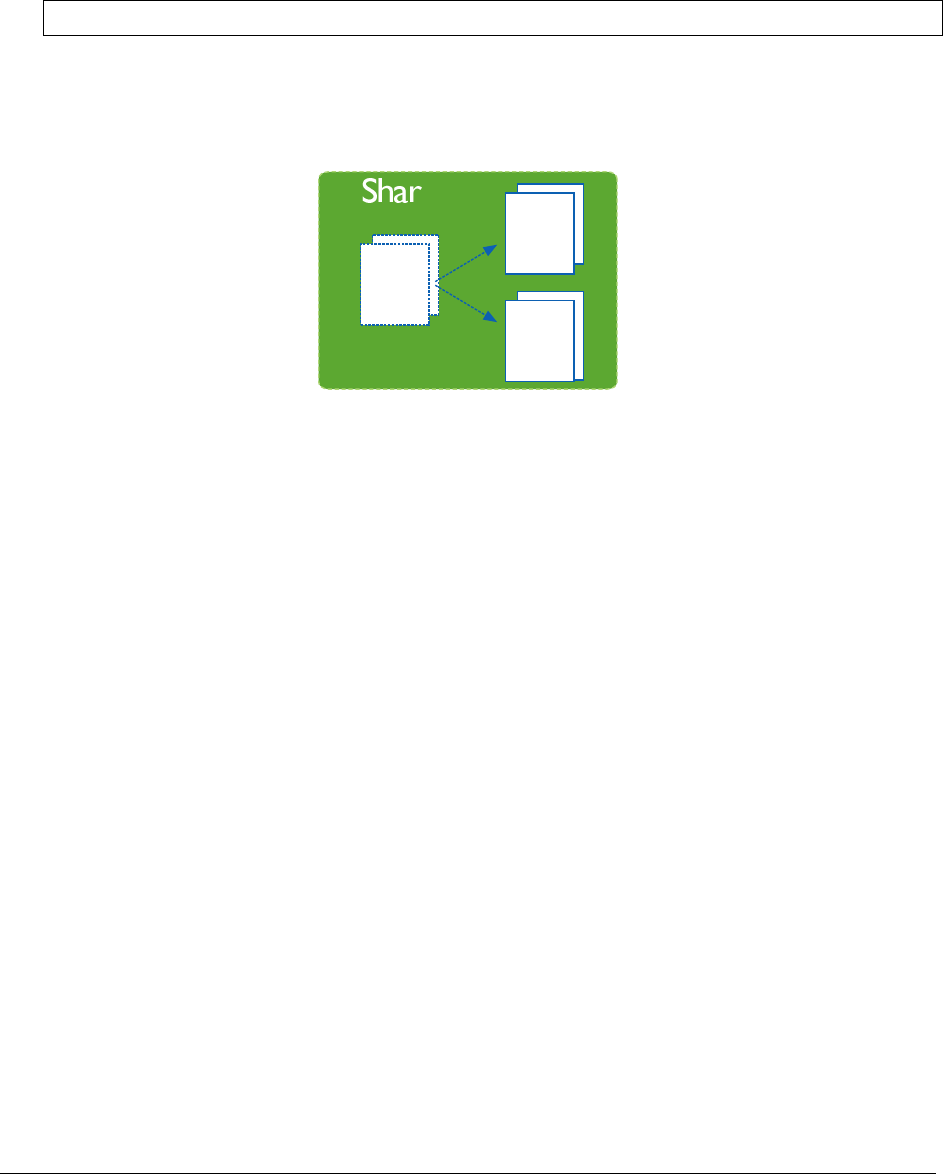

6Sharding 118

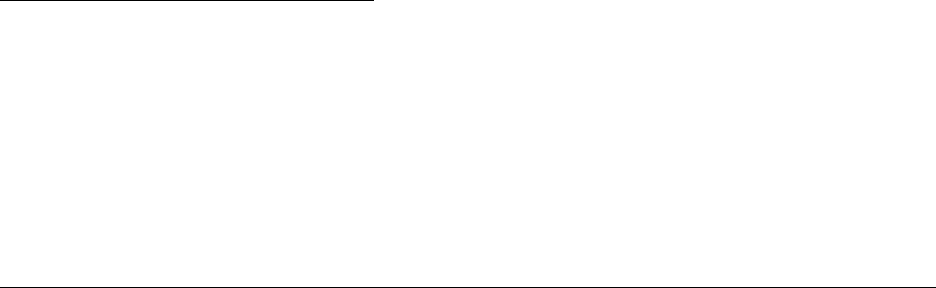

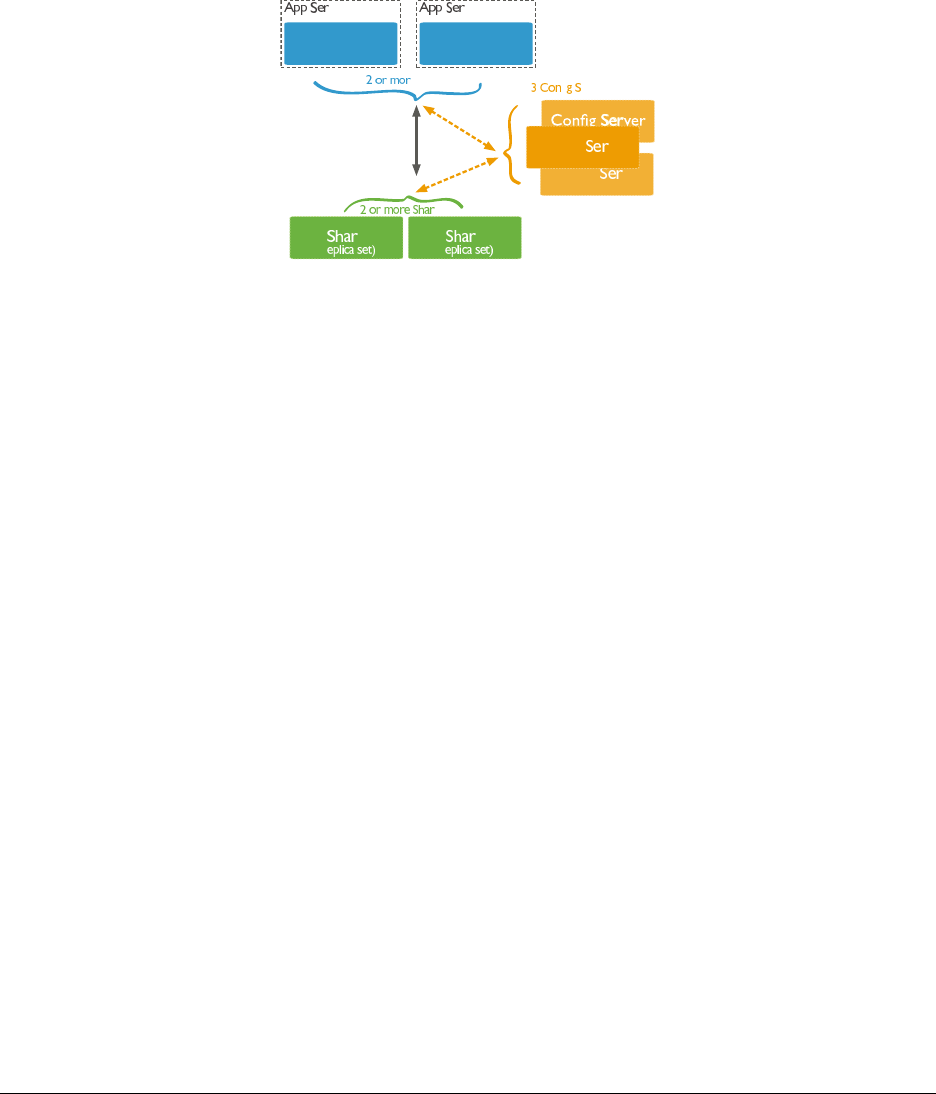

6.1 Introduction to Sharding ........................................118

6.2 Balancing Shards ............................................125

6.3 Shard Zones ...............................................127

6.4 Lab: Setting Up a Sharded Cluster ..................................129

7ReportingToolsandDiagnostics 136

7.1 Performance Troubleshooting .....................................136

8BackupandRecovery 144

8.1 Backup and Recovery .........................................144

9Aggregation 149

9.1 Intro to Aggregation ...........................................149

10 Views 157

10.1 Views Tutorial ..............................................157

10.2 Lab: Vertical Views ...........................................159

10.3 Lab: Horizontal Views .........................................160

10.4 Lab: Reshaped Views .........................................161

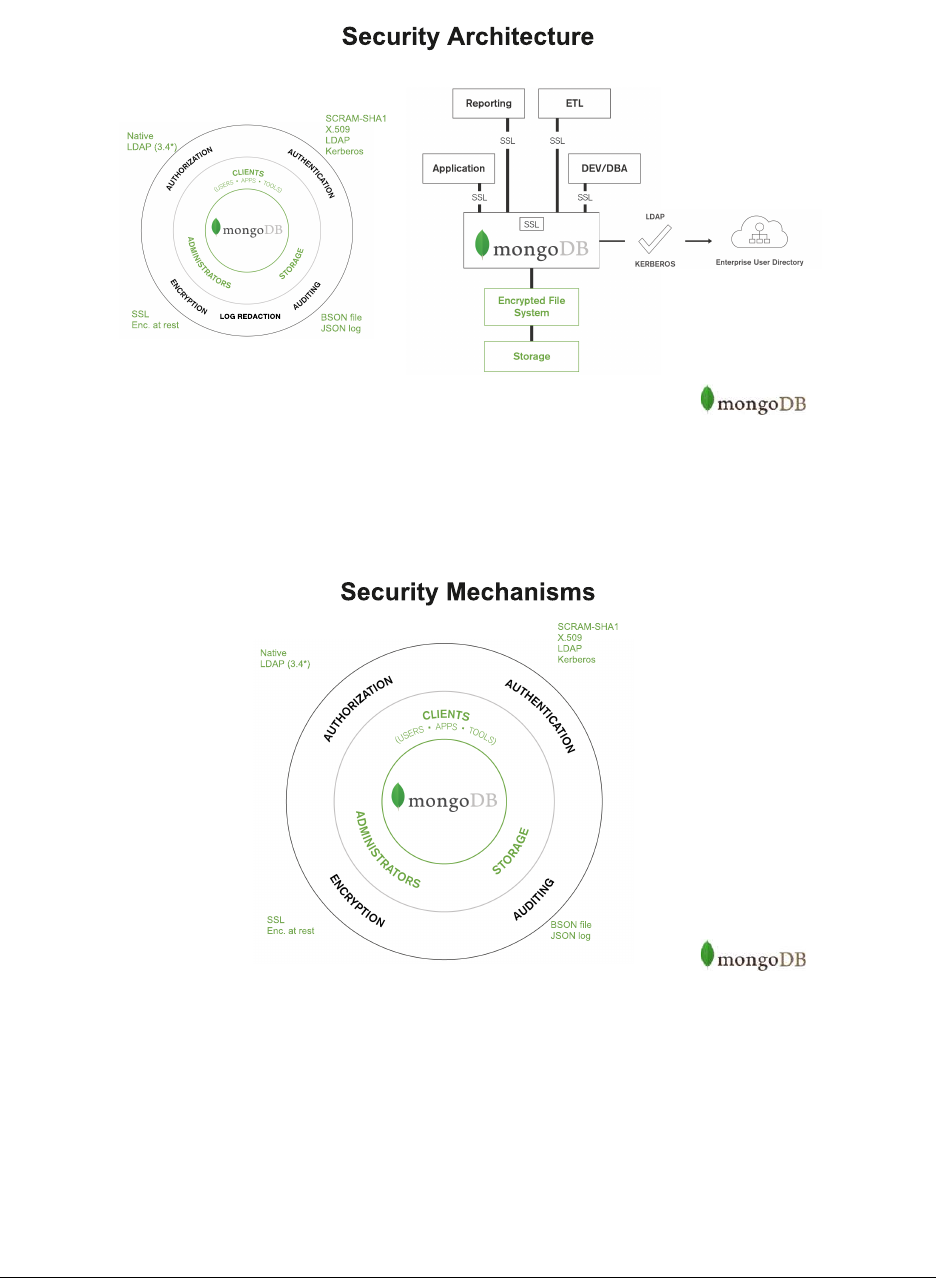

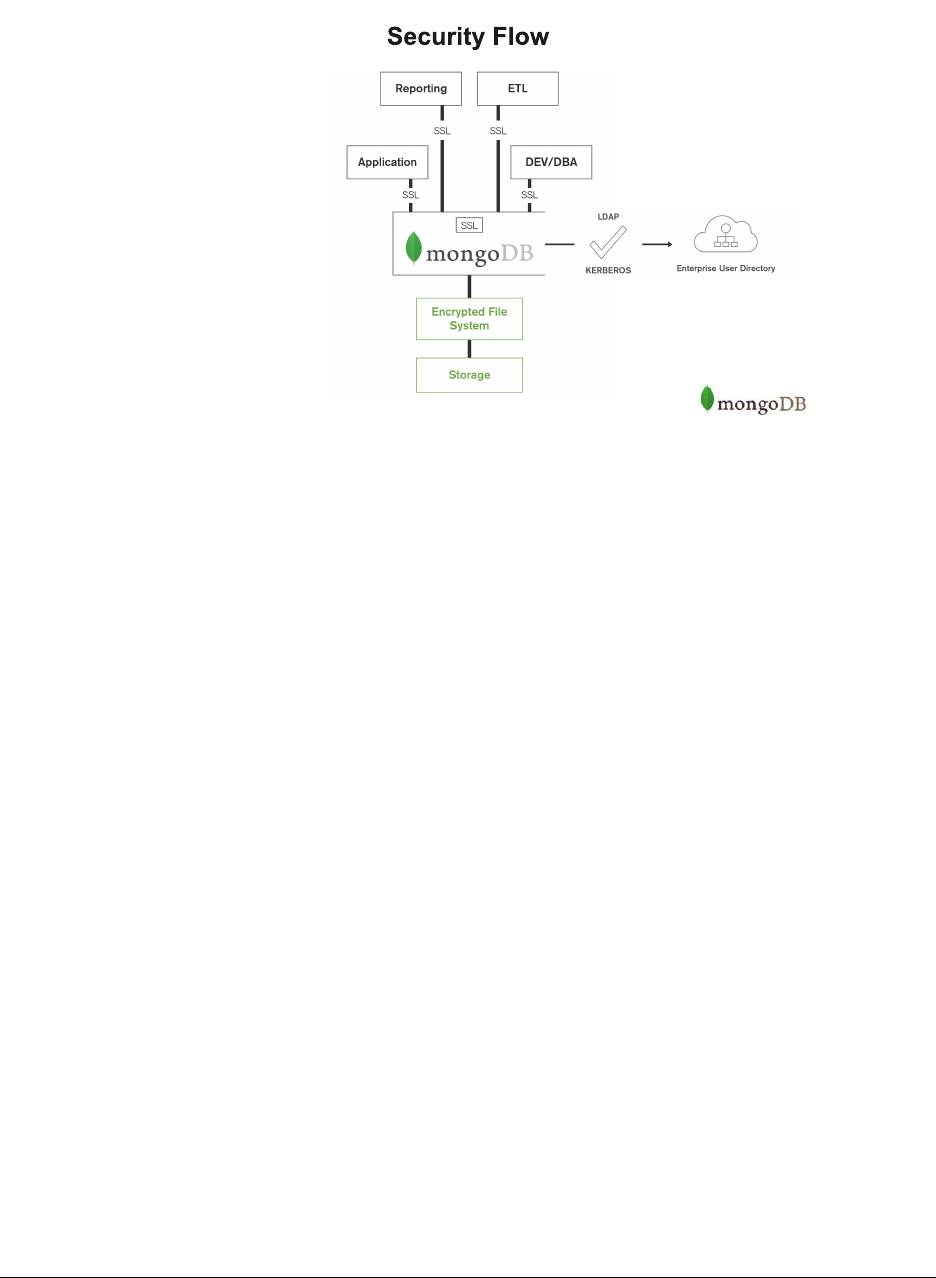

11 Security 162

11.1 Security Introduction ..........................................162

11.2 Authorization ...............................................165

11.3 Lab: Administration Users .......................................171

11.4 Lab: Create User-Defined Role (Optional) ..............................172

11.5 Authentication ..............................................174

11.6 Lab: Secure mongod ..........................................175

11.7 Auditing ..................................................176

11.8 Encryption ................................................178

11.9 Log Redaction ..............................................180

11.10Lab: Secured Replica Set - KeyFile (Optional) ...........................181

11.11Lab: LDAP Authentication & Authorization (Optional) ........................184

11.12Lab: Security Workshop ........................................186

12 MongoDB Atlas, Cloud & Ops Manager Fundamentals 195

12.1 MongoDB Cloud & Ops Manager ...................................195

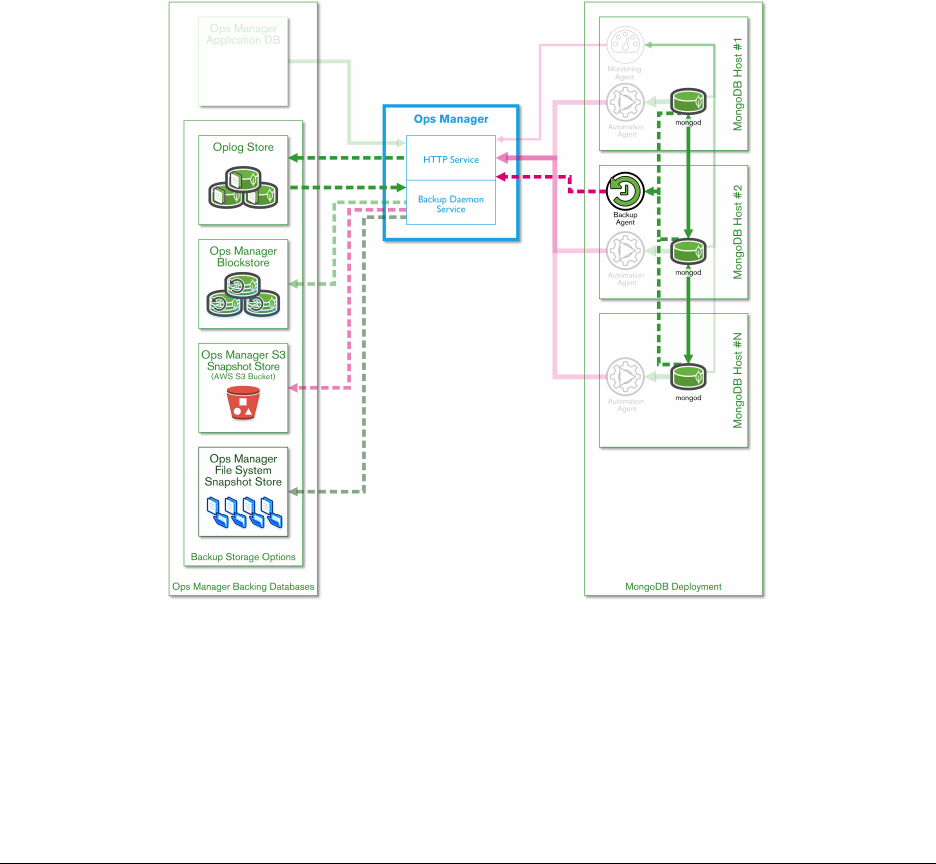

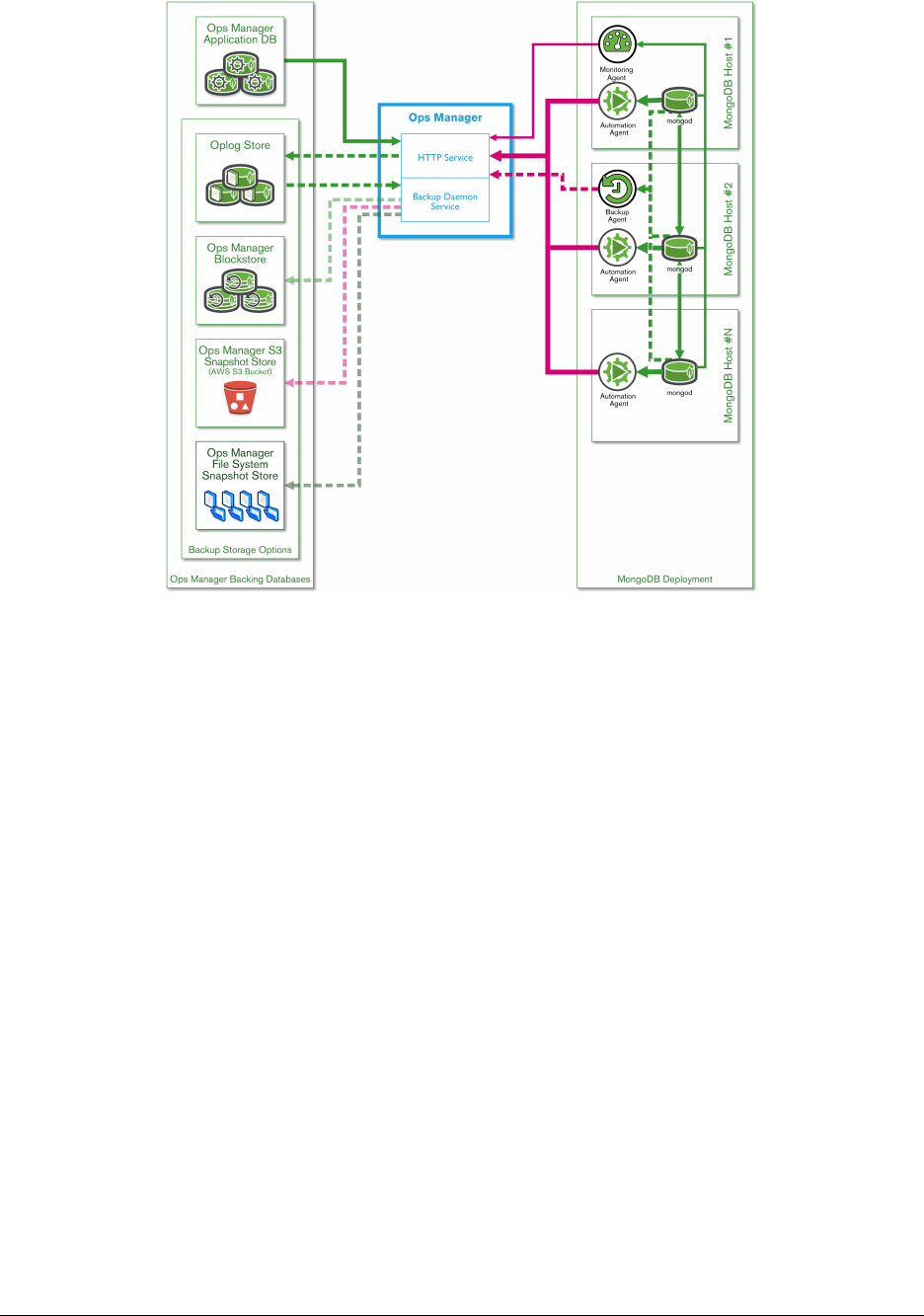

12.2 Automation ................................................197

12.3 Lab: Cluster Automation ........................................200

12.4 Monitoring ................................................201

12.5 Lab: Create an Alert ..........................................203

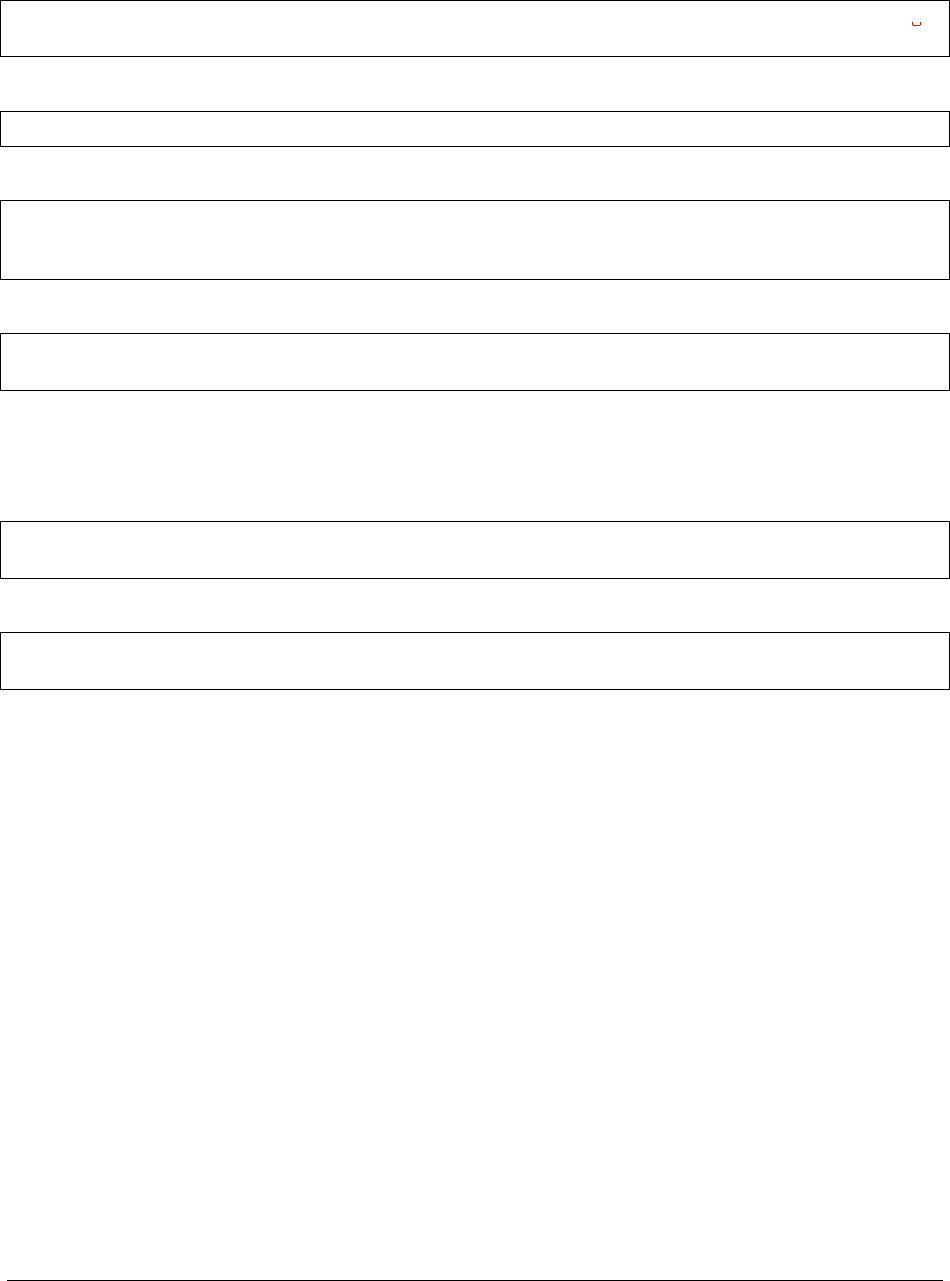

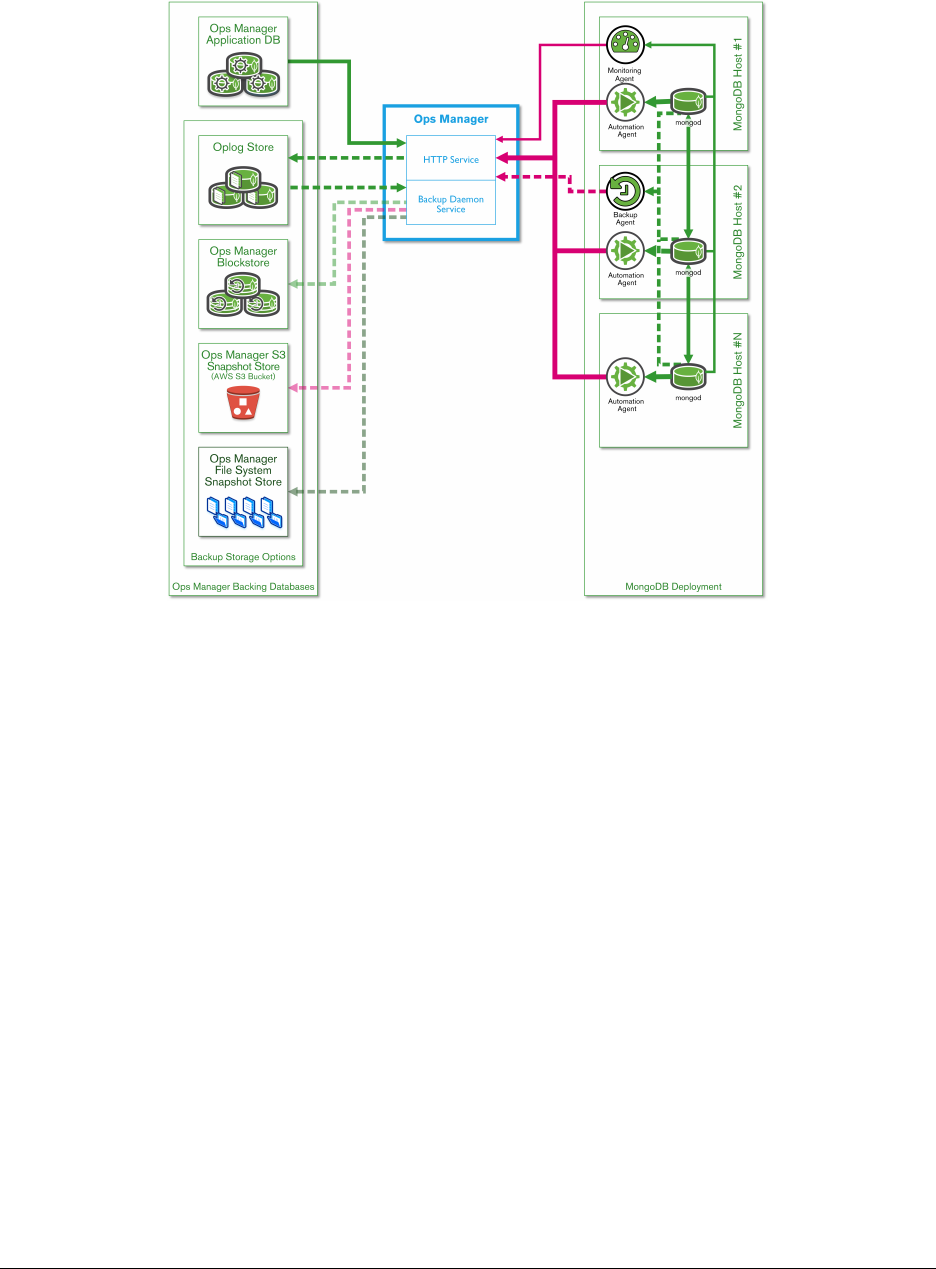

12.6 Backups .................................................203

13 MongoDB Cloud & Ops Manager Under the Hood 206

13.1 API ....................................................206

13.2 Lab: Cloud Manager API ........................................207

13.3 Architecture (Ops Manager) ......................................208

13.4 Security (Ops Manager) ........................................210

13.5 Lab: Install Ops Manager .......................................211

2

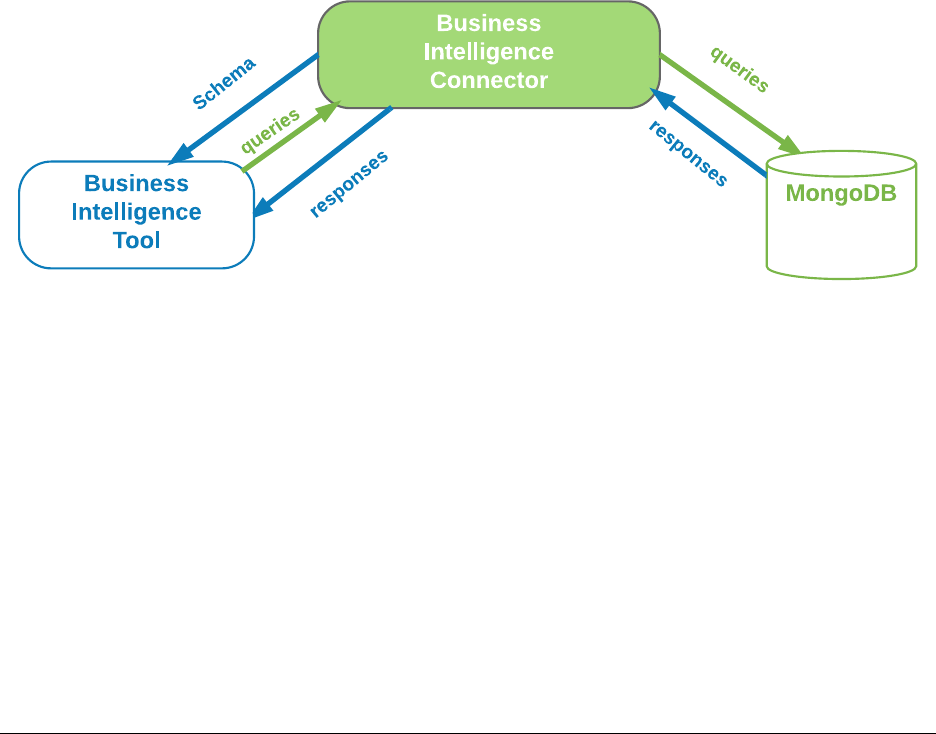

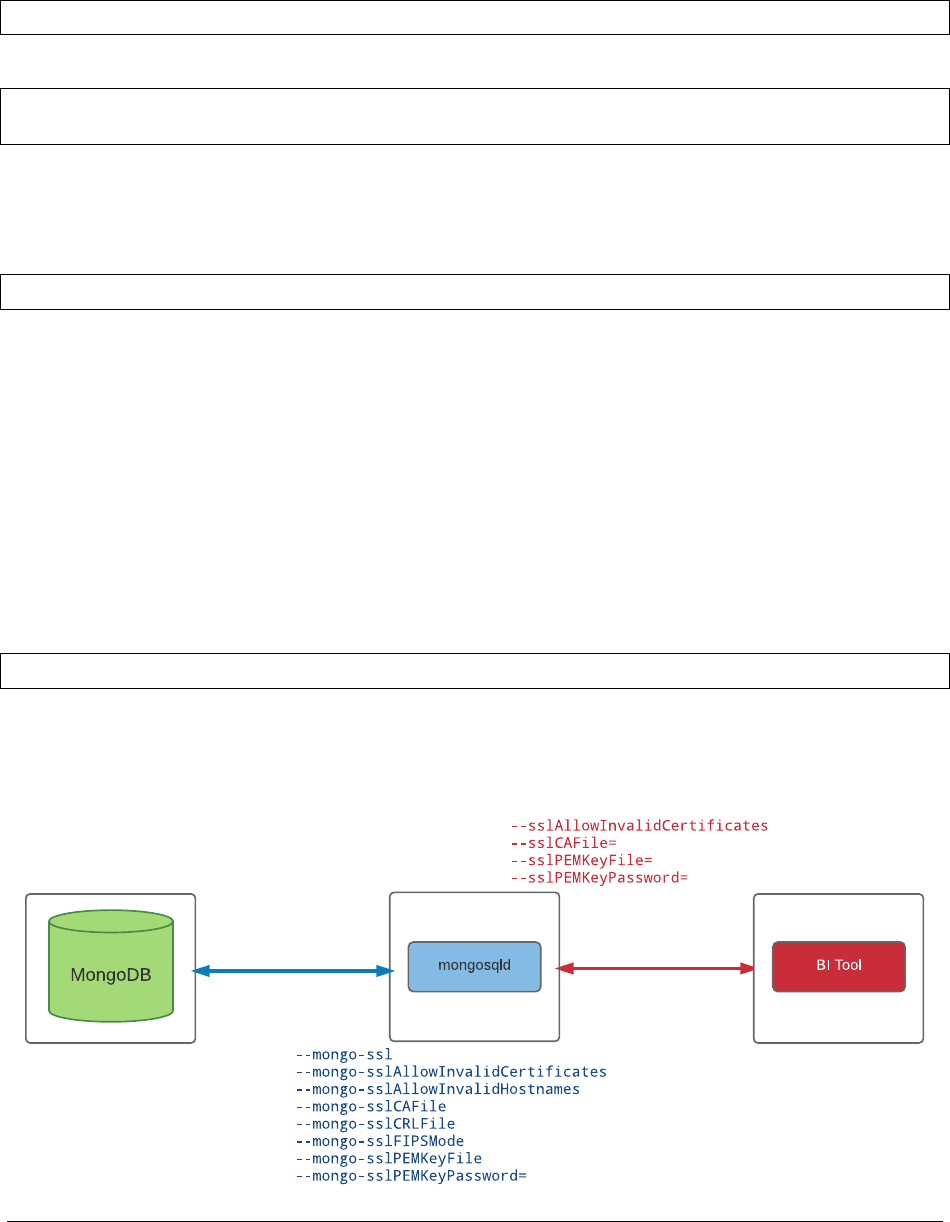

14 Introduction to MongoDB BI Connector 214

14.1 MongoDB Connector for BI ......................................214

3

1 Introduction

Warm Up: Activities to get the class started

MongoDB - The Company: About MongoDB, the company

MongoDB Overview: MongoDB philosophy and features

MongoDB Stores Documents: The structure of data in MongoDB

MongoDB Data Types: An overview of BSON data types in MongoDB

Lab: Installing and Configuring MongoDB: Install MongoDB and experiment with a few operations.

1.1 Warm Up

Introductions

• Who am I?

• My role at MongoDB

• My background and prior experience

Getting to Know You

• Who are you?

• What role do you play in your organization?

• What is your background?

• Do you have prior experience with MongoDB?

MongoDB Experience

• Who has never used MongoDB?

• Who has some experience?

• Who has worked with production MongoDB deployments?

• Who is more of a developer?

• Who is more of an operations person?

Logistics

1.2 MongoDB - The Company

10gen

• MongoDB was initially created in 2008 as part of a hosted application stack.
• The company was originally called 10gen.
• As part of their overarching plan to create the 10gen platform, the company built a database.
• Suddenly everybody said: “I like that! Give me that database!”

Origin of MongoDB

• 10gen became a database company.
• In 2013, the company rebranded as MongoDB, Inc.
• The founders have other startups to their credit: DoubleClick, ShopWiki, Gilt.
• The motivation for the database came from observing the following pattern with application development.
  – The user base grows.
  – The associated body of data grows.
  – Eventually the application outgrows the database.
  – Meeting performance requirements becomes difficult.

1.3 MongoDB Overview

Learning Objectives

Upon completing this module students should understand:

• MongoDB vs. relational databases and key/value stores
• Vertical vs. horizontal scaling
• The role of MongoDB in the development stack
• The structure of documents in MongoDB
• Array fields
• Embedded documents
• Fundamentals of BSON

MongoDB is a Document Database

Documents are associative arrays like:

• Python dictionaries
• Ruby hashes
• PHP arrays
• JSON objects

An Example MongoDB Document

A MongoDB document expressed using JSON syntax.

{
  "_id": "/apple-reports-second-quarter-revenue",
  "headline": "Apple Reported Second Quarter Revenue Today",
  "date": ISODate("2015-03-24T22:35:21.908Z"),
  "author": {
    "name": "Bob Walker",
    "title": "Lead Business Editor"
  },
  "copy": "Apple beat Wall St expectations by reporting ...",
  "tags": [
    "AAPL", "Earnings", "Cupertino"
  ],
  "comments": [
    { "name": "Frank", "comment": "Great Story" },
    { "name": "Wendy", "comment": "When can I buy an Apple Watch?" }
  ]
}

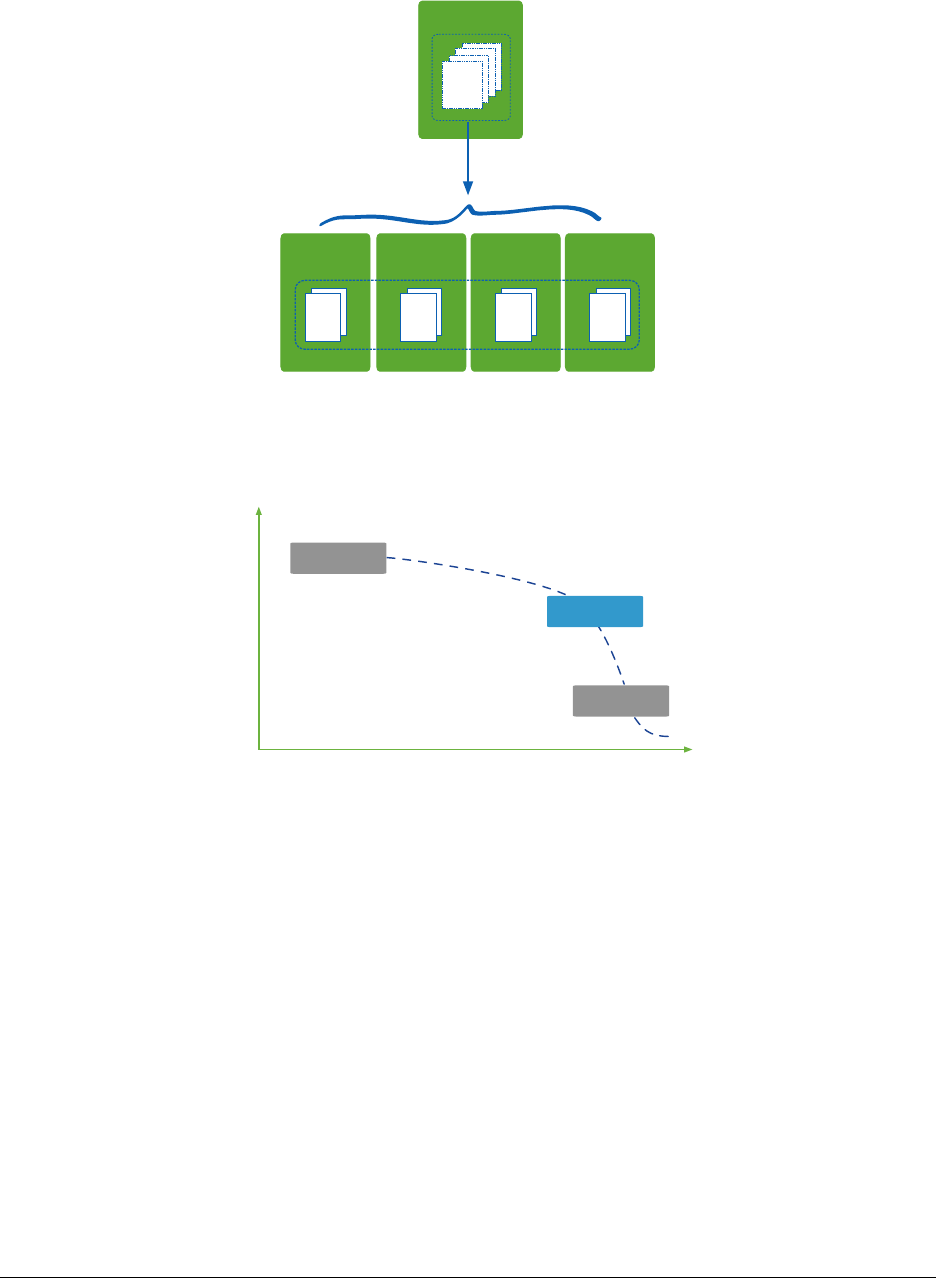

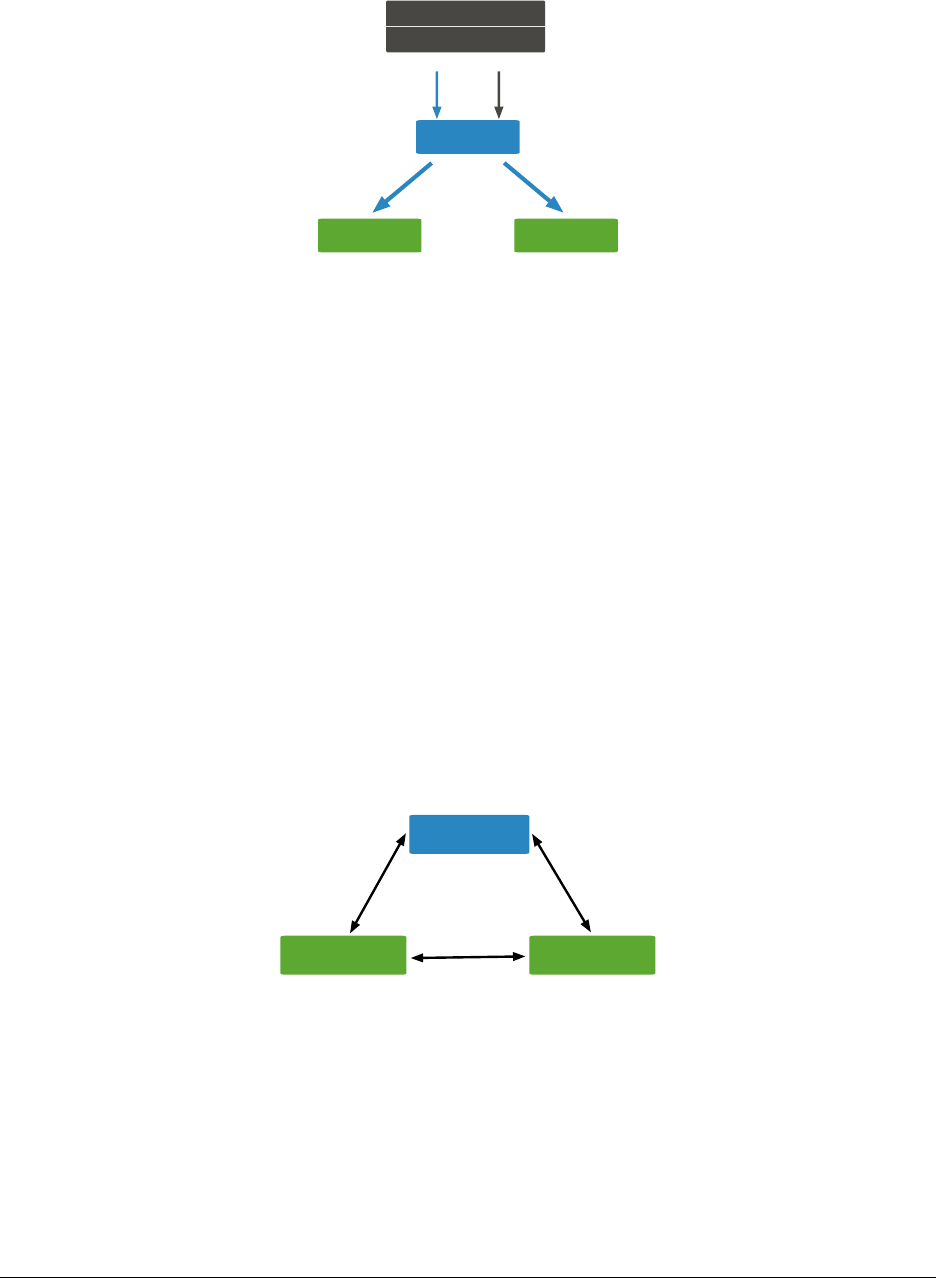

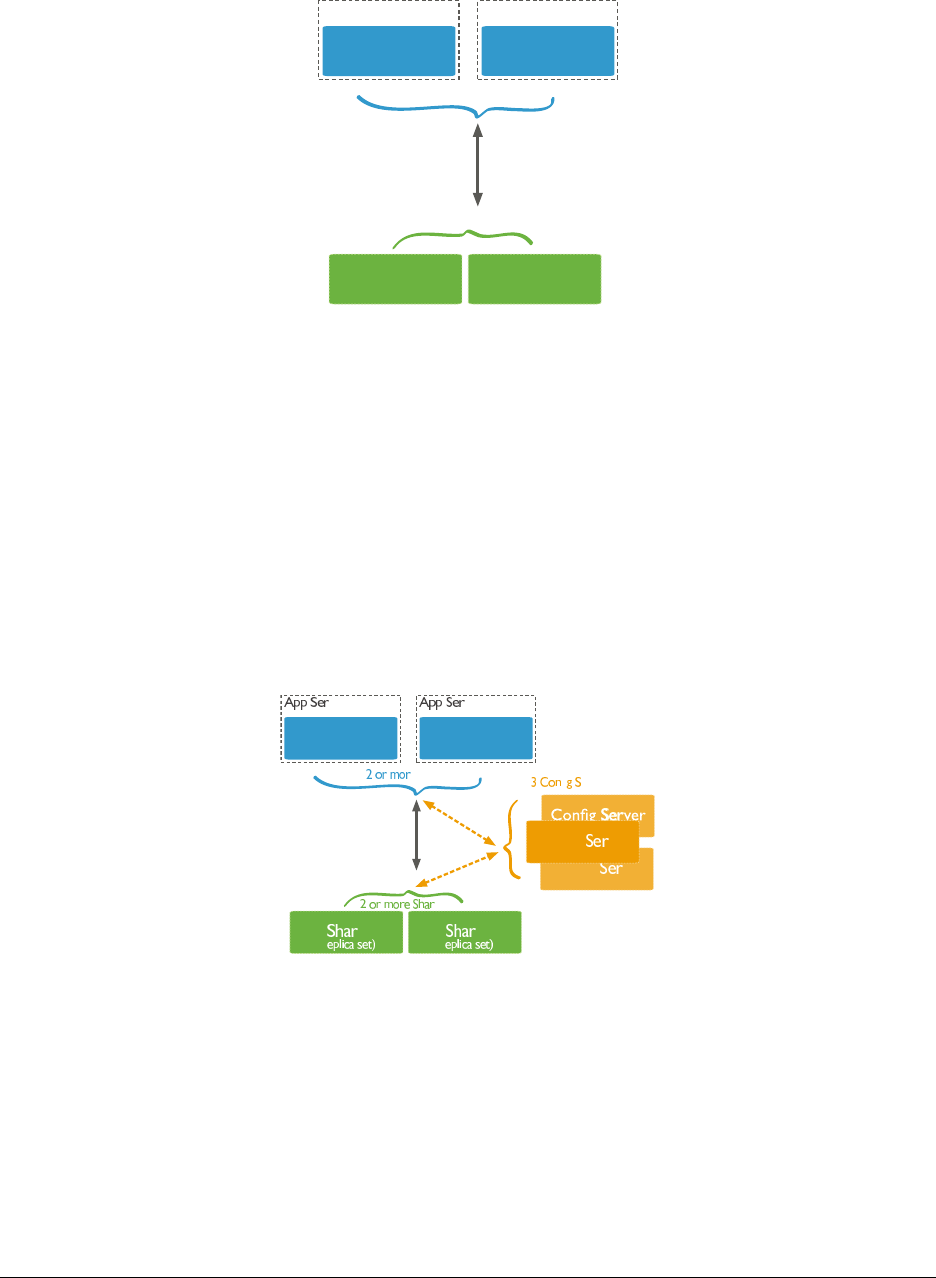

Vertical Scaling

[Figure: server diagrams, each labeled CPU, RAM, and I/O]

Scaling with MongoDB

[Figure: a 1 TB collection (Collection1) distributed across Shards A, B, C, and D, 256 GB each]

Database Landscape

[Figure: RDBMS, Memcached, and MongoDB positioned by Depth of Functionality versus Scalability & Performance]

MongoDB Deployment Models

1.4 MongoDB Stores Documents

Learning Objectives

Upon completing this module, students should understand:

• JSON
• BSON basics
• That documents are organized into collections

JSON

• JavaScript Object Notation
• Objects are associative arrays.
• They are composed of key-value pairs.

A Simple JSON Object

{
  "firstname": "Thomas",
  "lastname": "Smith",
  "age": 29
}

JSON Keys and Values

• Keys must be strings.
• Values may be any of the following:
  – string (e.g., “Thomas”)
  – number (e.g., 29, 3.7)
  – true / false
  – null
  – array (e.g., [88.5, 91.3, 67.1])
  – object
• More detail at json.org [1].

Example Field Values

{
  "headline": "Apple Reported Second Quarter Revenue Today",
  "date": ISODate("2015-03-24T22:35:21.908Z"),
  "views": 1234,
  "author": {
    "name": "Bob Walker",
    "title": "Lead Business Editor"
  },
  "tags": [
    "AAPL",
    23,
    { "name": "city", "value": "Cupertino" },
    { "name": "stockPrice", "value": NumberDecimal("143.51") },
    [ "Electronics", "Computers" ]
  ]
}

[1] http://json.org/

BSON

• MongoDB stores data as Binary JSON (BSON).
• MongoDB drivers send and receive data in this format.
• They map BSON to native data structures.
• BSON provides support for all JSON data types and several others.
• BSON was designed to be lightweight, traversable and efficient.
• See bsonspec.org [2].

BSON Hello World

// JSON
{ "hello": "world" }

// BSON
\x16\x00\x00\x00 // document size
\x02 // type 2=string
hello\x00 // name of the field, null terminated
\x06\x00\x00\x00 // size of the string value
world\x00 // string value, null terminated
\x00 // end of document
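The byte layout above can be reproduced by hand. The following is a minimal sketch in plain JavaScript (Node.js), assuming a document with exactly one string field; `encodeStringField` is a hypothetical helper written for this illustration, not part of any MongoDB driver.

```javascript
// Minimal sketch: hand-encode { "hello": "world" } as BSON.
// Handles only a single string field; this is not a general BSON encoder.
function encodeStringField(name, value) {
  const nameBytes = Buffer.from(name + "\0", "utf8");   // field name, null terminated
  const valueBytes = Buffer.from(value + "\0", "utf8"); // string value, null terminated
  const valueSize = Buffer.alloc(4);
  valueSize.writeInt32LE(valueBytes.length, 0);         // size includes the trailing null
  const body = Buffer.concat([
    Buffer.from([0x02]),                                // type 2 = string
    nameBytes,
    valueSize,
    valueBytes,
    Buffer.from([0x00]),                                // end of document
  ]);
  const docSize = Buffer.alloc(4);
  docSize.writeInt32LE(body.length + 4, 0);             // total size counts these 4 bytes too
  return Buffer.concat([docSize, body]);
}

const bson = encodeStringField("hello", "world");
console.log(bson.length);           // 22, which is 0x16
console.log(bson.toString("hex"));
```

The document size comes out as 22 bytes (0x16) because the four size bytes count themselves, and the string size is 6 because it includes the trailing null.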

A More Complex BSON Example

// JSON
{ "BSON": [ "awesome", 5.05, 1986 ] }

// BSON
\x31\x00\x00\x00 // document size
\x04 // type=4, array
BSON\x00 // name of first element
\x26\x00\x00\x00 // size of the array, in bytes
\x02 // type=2, string
\x30\x00 // element name '0'
\x08\x00\x00\x00 // size of value for array element 0
awesome\x00 // string value for element 0
\x01 // type=1, double
\x31\x00 // element name '1'
\x33\x33\x33\x33\x33\x33\x14\x40 // double value for array element 1
\x10 // type=16, int32
\x32\x00 // element name '2'
\xc2\x07\x00\x00 // int32 value for array element 2
\x00 // end of array
\x00 // end of document

[2] http://bsonspec.org/#/specification

Documents, Collections, and Databases

• Documents are stored in collections.
• Collections are contained in a database.
• Example:
  – Database: products
  – Collections: books, movies, music
• Each database-collection combination defines a namespace.
  – products.books
  – products.movies
  – products.music

The _id Field

• All documents must have an _id field.
• If no _id is specified when a document is inserted, MongoDB will add the _id field as an ObjectId.
• Most drivers will actually create the ObjectId if no _id is specified.
• Some restrictions:
  – The _id is immutable.
  – It cannot be an array.
  – The _id field must be unique within a collection.
    * It acts as the primary key for replication.

1.5 MongoDB Data Types

Learning Objectives

By the end of this module, students should understand:

• What data types MongoDB supports

• Special consideration for some BSON types

What is BSON?

BSON is a binary serialization of JSON, used to store documents and make remote procedure calls in MongoDB. For more in-depth coverage of BSON, refer to bsonspec.org [3].

Note: All official MongoDB drivers map BSON to native types and data structures.

BSON types

MongoDB supports a wide range of BSON types. Each data type has a corresponding number and string alias that can be used with the $type operator to query documents by BSON type.

Type                    Number   Alias
Double                  1        "double"
String                  2        "string"
Object                  3        "object"
Array                   4        "array"
Binary data             5        "binData"
ObjectId                7        "objectId"
Boolean                 8        "bool"
Date                    9        "date"
Null                    10       "null"

BSON types continued

Type                    Number   Alias
Regular Expression      11       "regex"
JavaScript              13       "javascript"
JavaScript (w/ scope)   15       "javascriptWithScope"
32-bit integer          16       "int"
Timestamp               17       "timestamp"
64-bit integer          18       "long"
Decimal128              19       "decimal"
Min key                 -1       "minKey"
Max key                 127      "maxKey"

[3] http://bsonspec.org/

ObjectId

[Figure: the 12 bytes of an ObjectId, divided into Date, MAC address, PID, and Counter fields and written as a hex string]

ObjectId:

> ObjectId()
ObjectId("58dc309ce3f39998099d6275")
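To make the field layout concrete, here is a small sketch in plain JavaScript (Node.js) that splits the hex string shown above into its parts. The byte widths (4 for the date, 3 for the machine identifier, 2 for the PID, 3 for the counter) are an assumption based on the ObjectId layout of this MongoDB era, and `parseObjectIdHex` is a hypothetical helper, not a shell function.

```javascript
// Sketch: split a 24-character ObjectId hex string into its four fields.
// Field widths (4 + 3 + 2 + 3 bytes) are an assumption matching the
// Date / MAC address / PID / Counter layout described above.
function parseObjectIdHex(hex) {
  if (!/^[0-9a-f]{24}$/i.test(hex)) {
    throw new Error("expected 24 hex characters");
  }
  const seconds = parseInt(hex.slice(0, 8), 16);   // 4-byte creation time, in seconds
  return {
    seconds: seconds,
    created: new Date(seconds * 1000),             // JavaScript dates use milliseconds
    machine: hex.slice(8, 14),                     // 3 bytes
    pid: parseInt(hex.slice(14, 18), 16),          // 2 bytes
    counter: parseInt(hex.slice(18, 24), 16),      // 3 bytes
  };
}

const parts = parseObjectIdHex("58dc309ce3f39998099d6275");
console.log(parts.created.toISOString()); // 2017-03-29T22:09:32.000Z
```

Because the creation time leads the value, ObjectIds generated later generally sort after earlier ones.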

Timestamps

BSON has a special timestamp type for internal MongoDB use; it is not associated with the regular Date type.

Date

BSON Date is a 64-bit integer that represents the number of milliseconds since the Unix epoch (Jan 1, 1970). This results in a representable date range of about 290 million years into the past and future.

• The official BSON spec refers to the BSON Date type as UTC datetime.
• Signed data type. Negative values represent dates before 1970.

var today = ISODate() // using the ISODate constructor
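The arithmetic behind these statements can be checked with a short sketch in plain JavaScript (Node.js). It models the BSON value as a signed 64-bit millisecond count; the 365.25-day year is an assumption made only for the estimate.

```javascript
// Sketch: BSON Date semantics, modeled with plain JavaScript values.
const MS_PER_YEAR = 365.25 * 24 * 60 * 60 * 1000; // assumed average year length

// Negative millisecond counts are dates before the Unix epoch.
const beforeEpoch = new Date(-1);
console.log(beforeEpoch.toISOString()); // 1969-12-31T23:59:59.999Z

// A signed 64-bit integer spans -2^63 .. 2^63 - 1 milliseconds.
const maxMillis = 2n ** 63n - 1n;
const yearsEachWay = Number(maxMillis) / MS_PER_YEAR;
console.log(Math.round(yearsEachWay / 1e6)); // 292 (million years)
```

Note that a JavaScript `Date` object covers a much narrower range than the BSON type, so the last lines compute the range from the integer width rather than from a `Date`.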

Decimal

In MongoDB 3.4, support was added for 128-bit decimals.

• The decimal BSON type uses the decimal128 decimal-based floating-point numbering format.
• This supports 34 significant digits and an exponent range of -6143 to +6144.
• Intended for applications that handle monetary and scientific data that requires exact precision.

How to use Decimal

For specific information about how your preferred driver supports decimal128, see the driver documentation [4].

In the Mongo shell, we use the NumberDecimal() constructor.

• Can be created with a string argument or a double
• Stored in the database as NumberDecimal("999.4999")

> NumberDecimal("999.4999")
NumberDecimal("999.4999")
> NumberDecimal(999.4999)
NumberDecimal("999.4999")

[4] https://docs.mongodb.com/ecosystem/drivers/

Decimal Considerations

• If upgrading an existing database to use decimal128, it is recommended a new field be added to reflect the new type. The old field may be deleted after verifying consistency.
• If any fields contain decimal128 data, they will not be compatible with previous versions of MongoDB. There is no support for downgrading datafiles containing decimals.
• decimal types are not strictly equal to their double representations, so use the NumberDecimal constructor in queries.

1.6 Lab: Installing and Configuring MongoDB

Learning Objectives

Upon completing this exercise students should understand:

• How MongoDB is distributed
• How to install MongoDB
• Configuration steps for setting up a simple MongoDB deployment
• How to run MongoDB
• How to run the Mongo shell

Production Releases

64-bit production releases of MongoDB are available for the following platforms.

• Windows
• OS X
• Linux

Installing MongoDB

• Visit https://docs.mongodb.com/manual/installation/.
• Please install the Enterprise version of MongoDB.
• Click on the appropriate link, such as “Install on Windows” or “Install on OS X” and follow the instructions.
• Versions:
  – Even-numbered builds are production releases, e.g., 2.4.x, 2.6.x.
  – Odd numbers indicate development releases, e.g., 2.5.x, 2.7.x.

Linux Setup

PATH=$PATH:<path to mongodb>/bin
sudo mkdir -p /data/db
sudo chmod -R 744 /data/db
sudo chown -R `whoami` /data/db

Install on Windows

• Download and run the .msi Windows installer from mongodb.org/downloads.
• By default, binaries will be placed in the following directory.

C:\Program Files\MongoDB\Server\<VERSION>\bin

• It is helpful to add the location of the MongoDB binaries to your path.
• To do this, from “System Properties” select “Advanced” then “Environment Variables”.

Create a Data Directory on Windows

• Ensure there is a directory for your MongoDB data files.
• The default location is \data\db.
• Create a data directory with a command such as the following.

md \data\db

Launch a mongod

Explore the mongod command.

<path to mongodb>/bin/mongod --help

Launch a mongod with the MMAPv1 storage engine:

<path to mongodb>/bin/mongod --storageEngine mmapv1

Alternatively, launch with the WiredTiger storage engine (default).

<path to mongodb>/bin/mongod

Specify an alternate path for data files using the --dbpath option. (Make sure the directory already exists.) E.g.,

<path to mongodb>/bin/mongod --dbpath /test/mongodb/data/wt

The MMAPv1 Data Directory

ls /data/db

• The mongod.lock file
  – This prevents multiple mongods from using the same data directory simultaneously.
  – Each MongoDB database directory has one .lock.
  – The lock file contains the process id of the mongod that is using the directory.
• Data files
  – The names of the files correspond to available databases.
  – A single database may have multiple files.

The WiredTiger Data Directory

ls /data/db

• The mongod.lock file
  – Used in the same way as MMAPv1.
• Data files
  – Each collection and index is stored in its own file.
  – mongod will fail to start if MMAPv1 files are found in the data directory.

Import Exercise Data

unzip usb_drive.zip

cd usb_drive

mongoimport -d sample -c tweets twitter.json

mongoimport -d sample -c zips zips.json

mongoimport -d sample -c grades grades.json

cd dump

mongorestore -d sample city

mongorestore -d sample digg

Note: If there is an error importing data directly from a USB drive, please copy the sampledata.zip file to your local

computer first.

Launch a Mongo Shell

Open another command shell. Then type the following to start the Mongo shell.

mongo

Display available commands.

help

Explore Databases

Display available databases.

show dbs

To use a particular database we can type the following.

use <database_name>

db

Exploring Collections

show collections

db.<COLLECTION>.help()

db.<COLLECTION>.find()

Admin Commands

• There are also a number of admin commands at our disposal.
• The following will shut down the mongod we are connected to through the Mongo shell.
• You can also just kill it with Ctrl-C in the shell window from which you launched the mongod.

db.adminCommand({shutdown : 1})

• Confirm that the mongod process has indeed stopped.
• Once you have, please restart it.

2 Storage

Introduction to Storage Engines: MongoDB storage engines

2.1 Introduction to Storage Engines

Learning Objectives

Upon completing this module, students should be familiar with:

• Available storage engines in MongoDB
• MongoDB journaling mechanics
• The default storage engine for MongoDB
• Common storage engine parameters
• The storage engine API

What is a Database Storage Engine?

How Storage Engines Affect Performance

• Writing and reading documents

• Concurrency
• Compression algorithms
• Index format and implementation
• On-disk format

Storage Engine Journaling

• Keep track of all changes made to data files
• Stage writes sequentially before they can be committed to the data files
• Crash recovery: writes from the journal can be replayed to the data files in the event of a failure

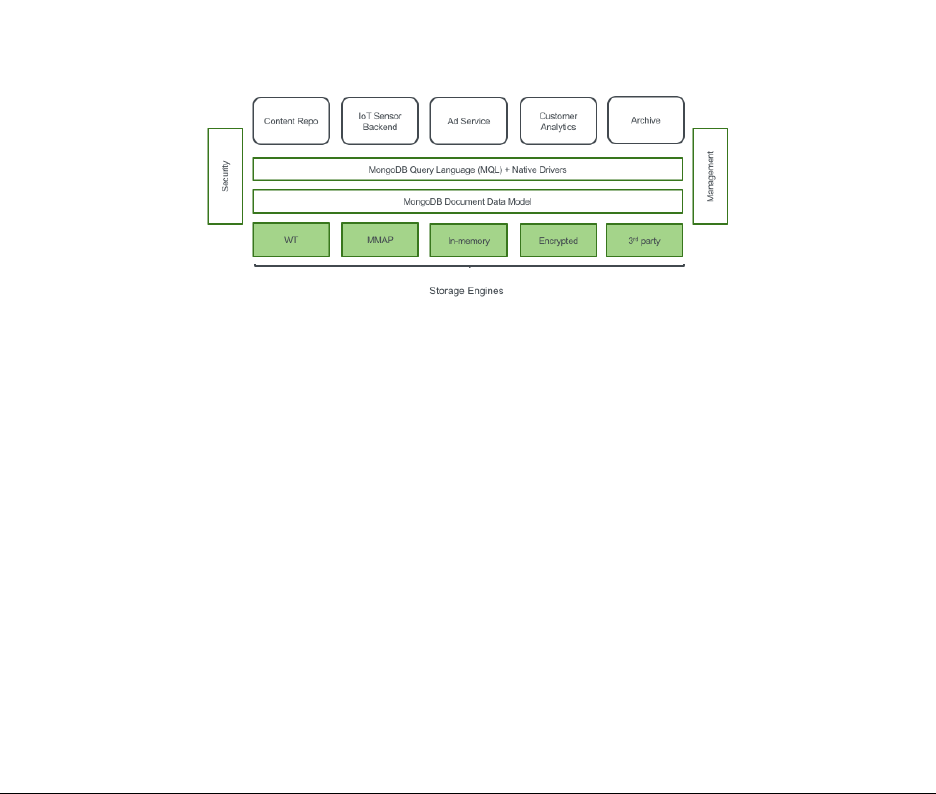

MongoDB Storage Engines

As of MongoDB 3.4, three storage engine options are available:

• WiredTiger (default)
  – with the option of on-disk/at rest encryption (Enterprise only)
• MMAPv1
• In-memory storage (Enterprise only)

Specifying a MongoDB Storage Engine

Use the --storageEngine parameter to specify which storage engine MongoDB should use. E.g.,

mongod --storageEngine mmapv1

Specifying a Location to Store Data Files

• Use the --dbpath parameter

mongod --dbpath /data/db

• Other files are also stored here. E.g.,
  – mongod.lock file
  – journal
• See the MongoDB docs for a complete list of storage options [5].

[5] http://docs.mongodb.org/manual/reference/program/mongod/#storage-options

MMAPv1 Storage Engine

• MMAPv1 is MongoDB’s original storage engine and was the default up to MongoDB 3.0.
• Specify the use of the MMAPv1 storage engine as follows:

mongod --storageEngine mmapv1

• MMAPv1 is based on memory-mapped files, which map data files on disk into virtual memory.
• As of MongoDB 3.0, MMAPv1 supports collection-level concurrency.

MMAPv1 Workloads

MMAPv1 excels at workloads where documents do not outgrow their original record size:

• High-volume inserts
• Read-only workloads
• In-place updates

Power of 2 Sizes Allocation Strategy

• MongoDB 3.0 uses power of 2 sizes allocation as the default record allocation strategy for MMAPv1.
• With this strategy, records include the document plus extra space, or padding.
• Each record has a size in bytes that is a power of 2 (e.g. 32, 64, 128, ... 2MB).
• For documents larger than 2MB, allocation is rounded up to the nearest multiple of 2MB.
• This strategy enables MongoDB to efficiently reuse freed records to reduce fragmentation.
• In addition, the added padding gives a document room to grow without requiring a move.
  – Saves the cost of moving a document
  – Results in fewer updates to indexes
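The sizing rule can be sketched in a few lines of plain JavaScript (Node.js). This is an illustration of the rule as stated on this slide, not MongoDB's actual allocator; `allocatedRecordSize` is a hypothetical function, and it assumes the input already includes any per-record overhead.

```javascript
const MB = 1024 * 1024;

// Sketch of the power of 2 sizes rule. Assumes `bytes` is the full record
// size; real MMAPv1 records also carry a header, which is ignored here.
function allocatedRecordSize(bytes) {
  if (bytes > 2 * MB) {
    return Math.ceil(bytes / (2 * MB)) * 2 * MB;  // round up to a multiple of 2MB
  }
  let size = 32;                                  // smallest record size
  while (size < bytes) {
    size *= 2;                                    // next power of 2
  }
  return size;
}

console.log(allocatedRecordSize(100));     // 128
console.log(allocatedRecordSize(129));     // 256
console.log(allocatedRecordSize(3 * MB));  // 4194304 (4MB)
```

A 100-byte record therefore has 28 bytes of padding to grow into before it would need to be moved.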

Compression in MongoDB

• Compression can significantly reduce the amount of disk space / memory required.
• The tradeoff is that compression requires more CPU.
• MMAPv1 does not support compression.
• WiredTiger does.

WiredTiger Storage Engine

• The WiredTiger storage engine excels at all workloads, especially write-heavy and update-heavy workloads.
• Notable features of the WiredTiger storage engine that do not exist in the MMAPv1 storage engine include:
  – Compression
  – Document-level concurrency
• Default storage engine since MongoDB 3.2.
• For older versions, specify the use of the WiredTiger storage engine as follows.

mongod --storageEngine wiredTiger

WiredTiger Compression Options

• snappy (default): less CPU usage than zlib, less reduction in data size
• zlib: greater CPU usage than snappy, greater reduction in data size
• none: no compression

Configuring Compression in WiredTiger

Use the --wiredTigerCollectionBlockCompressor parameter. E.g.,

mongod --storageEngine wiredTiger --wiredTigerCollectionBlockCompressor zlib

Configuring Memory Usage in WiredTiger

Use the --wiredTigerCacheSizeGB parameter to designate the amount of RAM for the cache used by the WT storage engine.

• By default, this value is set to the larger of:
  – 50% of (physical RAM minus 1GB), or 256 MB (for MongoDB 3.4+)
  – 60% of physical RAM minus 1GB, or 1GB (for MongoDB 3.2)
• Additionally, MongoDB uses memory for connections, aggregations, sorts, ...
• The rest of the memory is used by the file system cache, which is also needed by WT for optimal performance.
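As a worked example of the MongoDB 3.4+ default, the following plain JavaScript (Node.js) sketch computes the cache size for a given amount of RAM. Reading the rule as 0.5 × (RAM − 1GB) is an assumption taken from the MongoDB manual's wording for later releases, and `defaultCacheBytes` is a hypothetical helper, not a MongoDB API.

```javascript
const GB = 1024 ** 3;
const MB = 1024 ** 2;

// Sketch of the MongoDB 3.4+ default: the larger of
// 50% of (RAM - 1GB) and 256MB. The grouping of the subtraction
// is an assumption based on the MongoDB manual.
function defaultCacheBytes(ramBytes) {
  return Math.max(0.5 * (ramBytes - GB), 256 * MB);
}

console.log(defaultCacheBytes(4 * GB) / GB);   // 1.5
console.log(defaultCacheBytes(16 * GB) / GB);  // 7.5
console.log(defaultCacheBytes(1 * GB) / MB);   // 256
```

On small hosts the 256 MB floor applies, which is worth keeping in mind when several mongod processes share one machine.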

Journaling in MMAPv1 vs. WiredTiger

• MMAPv1 uses write-ahead journaling to ensure consistency and durability between fsyncs.
• WiredTiger uses a write-ahead log in combination with checkpoints to ensure durability.
• Regardless of storage engine, always use journaling in production.

MMAPv1 Journaling Mechanics

• Journal files in <DATA-DIR>/journal are append only
• 1GB per journal file
• Once MongoDB applies all write operations from a journal file to the database data files, it deletes the journal file (or re-uses it)
• Usually only a few journal files in the <DATA-DIR>/journal directory

MMAPv1 Journaling Mechanics (Continued)

• Data is flushed from the shared view to data files every 60 seconds (configurable)
• The operating system may force a flush at a higher frequency than 60 seconds if the system is low on free memory
• Once a journal file contains only flushed writes, it is no longer needed for recovery and can be deleted or re-used

WiredTiger Journaling Mechanics

• WiredTiger will commit a checkpoint to disk every 60 seconds or when there are 2 gigabytes of data to write.
• Between and during checkpoints the data files are always valid.
• The WiredTiger journal persists all data modifications between checkpoints.
• If MongoDB exits between checkpoints, it uses the journal to replay all data modified since the last checkpoint.
• By default, the WiredTiger journal is compressed using snappy.
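The interplay of checkpoints and the journal can be illustrated with a toy model in plain JavaScript (Node.js). This is a conceptual sketch only; `ToyEngine` and its methods are hypothetical names and do not reflect WiredTiger's real data structures or file formats.

```javascript
// Toy model (not MongoDB internals): the checkpoint is the last durable
// snapshot, and the journal holds every write made since that snapshot.
class ToyEngine {
  constructor() {
    this.memory = new Map();      // current in-memory state
    this.checkpoint = new Map();  // durable snapshot on "disk"
    this.journal = [];            // durable log of writes since the snapshot
  }
  write(key, value) {
    this.journal.push({ key, value });  // log first (write-ahead)
    this.memory.set(key, value);
  }
  takeCheckpoint() {
    this.checkpoint = new Map(this.memory);
    this.journal = [];                  // older entries are no longer needed
  }
  crashAndRecover() {
    this.memory = new Map(this.checkpoint);    // start from the last checkpoint
    for (const { key, value } of this.journal) {
      this.memory.set(key, value);             // replay journaled writes
    }
  }
}

const engine = new ToyEngine();
engine.write("a", 1);
engine.takeCheckpoint();
engine.write("b", 2);       // exists only in memory and the journal
engine.crashAndRecover();
console.log([...engine.memory.entries()]); // [ [ 'a', 1 ], [ 'b', 2 ] ]
```

Without the journal, the write to "b" would be lost, since it arrived after the last checkpoint.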

Storage Engine API

MongoDB 3.0 introduced a storage engine API:

• Abstracted storage engine functionality in the code base
• Easier for MongoDB to develop future storage engines
• Easier for third parties to develop their own MongoDB storage engines

Conclusion

• MongoDB 3.0 introduced pluggable storage engines.
• Current options include:
  – WiredTiger (default since MongoDB 3.2)
  – MMAPv1
  – In-memory storage (Enterprise only)
• WiredTiger introduces the following to MongoDB:
  – Compression
  – Document-level concurrency

3 CRUD

Creating and Deleting Documents: Inserting documents into collections, deleting documents, and dropping collections

Reading Documents: The find() command, query documents, dot notation, and cursors

Query Operators: MongoDB query operators including: comparison, logical, element, and array operators

Lab: Finding Documents: Exercises for querying documents in MongoDB

Updating Documents: Using update methods and associated operators to mutate existing documents

Lab: Updating Documents: Exercises for updating documents in MongoDB

3.1 Creating and Deleting Documents

Learning Objectives

Upon completing this module students should understand:

• How to insert documents into MongoDB collections
• _id fields
• How to delete documents from a collection
• How to remove a collection from a database
• How to remove a database from a MongoDB deployment

Creating New Documents

• Create documents using insertOne() and insertMany().
• For example:

// Specify the collection name
db.<COLLECTION>.insertOne( { "name": "Mongo" } )

// For example
db.people.insertOne( { "name": "Mongo" } )

Example: Inserting a Document

Experiment with the following commands.

use sample

db.movies.insertOne( { "title": "Jaws" } )
db.movies.find()

Implicit _id Assignment

• We did not specify an _id in the document we inserted.
• If you do not assign one, MongoDB will create one automatically.
• The value will be of type ObjectId.

Example: Assigning _ids

Experiment with the following commands.

db.movies.insertOne( { "_id": "Jaws", "year": 1975 } )
db.movies.find()

Inserts will fail if...

• There is already a document in the collection with that _id.
• You try to assign an array to the _id.
• The argument is not a well-formed document.

Example: Inserts will fail if...

// fails because _id can't have an array value
db.movies.insertOne( { "_id": [ "Star Wars",
                                "The Empire Strikes Back",
                                "Return of the Jedi" ] } )

// succeeds
db.movies.insertOne( { "_id": "Star Wars" } )

// fails because of duplicate id
db.movies.insertOne( { "_id": "Star Wars" } )

// malformed document
db.movies.insertOne( { "Star Wars" } )

insertMany()

• You may bulk insert using an array of documents.
• Use insertMany() instead of insertOne().

Ordered insertMany()

• For ordered inserts, MongoDB will stop processing inserts upon encountering an error.
• This means that only inserts occurring before an error will complete.
• The default setting for db.<COLLECTION>.insertMany() is an ordered insert.
• See the next exercise for an example.

Example: Ordered insertMany()

Experiment with the following operation.

db.movies.insertMany( [ { "_id": "Batman", "year": 1989 },
                        { "_id": "Home Alone", "year": 1990 },
                        { "_id": "Ghostbusters", "year": 1984 },
                        { "_id": "Ghostbusters", "year": 1984 } ] )
db.movies.find()

Unordered insertMany()

• Pass { ordered: false } to insertMany() to perform unordered inserts.
• If any given insert fails, MongoDB will still attempt all of the others.
• The inserts may be executed in a different order than you specified.
• The next exercise is very similar to the previous one.
• However, we are using { ordered: false }.
• One insert will fail, but all the rest will succeed.

Example: Unordered insertMany()

Experiment with the following insert.

db.movies.insertMany( [ { "_id": "Jaws", "year": 1975 },
                        { "_id": "Titanic", "year": 1997 },
                        { "_id": "The Lion King", "year": 1994 } ],
                      { ordered: false } )
db.movies.find()
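The difference between ordered and unordered inserts can be modeled without a server. The sketch below is plain JavaScript (Node.js) using an in-memory set of _id values; `simulateInsertMany` is a hypothetical stand-in written for this illustration and is not a driver or shell API.

```javascript
// In-memory stand-in for a collection with a unique _id (hypothetical
// helper). Unordered mode here keeps input order, though MongoDB may
// execute unordered inserts in a different order.
function simulateInsertMany(existingIds, docs, options = { ordered: true }) {
  const ids = new Set(existingIds);
  const inserted = [];
  const errors = [];
  for (const doc of docs) {
    if (ids.has(doc._id)) {
      errors.push(`duplicate _id: ${doc._id}`);
      if (options.ordered) break;   // ordered: stop at the first error
      continue;                     // unordered: keep going
    }
    ids.add(doc._id);
    inserted.push(doc._id);
  }
  return { inserted, errors };
}

// "Jaws" already exists, as it does at this point in the exercises.
const docs = [{ _id: "Jaws" }, { _id: "Titanic" }, { _id: "The Lion King" }];
const ordered = simulateInsertMany(["Jaws"], docs);
const unordered = simulateInsertMany(["Jaws"], docs, { ordered: false });
console.log(ordered.inserted);    // []
console.log(unordered.inserted);  // [ 'Titanic', 'The Lion King' ]
```

With the duplicate placed first, the ordered call inserts nothing, while the unordered call still inserts the two remaining documents.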

The Shell is a JavaScript Interpreter

• Sometimes it is convenient to create test data using a little JavaScript.
• The mongo shell is a fully-functional JavaScript interpreter. You may:
  – Define functions
  – Use loops
  – Assign variables
  – Perform inserts

Exercise: Creating Data in the Shell

Experiment with the following commands.

for (i = 1; i <= 10000; i++) {
    db.stuff.insert( { "a": i } )
}
db.stuff.find()

Deleting Documents

You may delete documents from a MongoDB deployment in several ways.

• Use deleteOne() and deleteMany() to delete documents matching a specific set of conditions.
• Drop an entire collection.
• Drop a database.

Using deleteOne()

• Delete a document from a collection using deleteOne().
• This command has one required parameter, a query document.
• The first document in the collection matching the query document will be deleted.

Using deleteMany()

• Delete multiple documents from a collection using deleteMany().
• This command has one required parameter, a query document.
• All documents in the collection matching the query document will be deleted.
• Pass an empty document to delete all documents.

Example: Deleting Documents

Experiment with removing documents. Do a find() after each deleteMany() command below.

for (i = 1; i <= 20; i++) { db.testcol.insertOne( { _id: i, a: i } ) }

db.testcol.deleteMany( { a: 1 } ) // Delete the first document

// $lt is a query operator that enables us to select documents that
// are less than some value. More on operators soon.
db.testcol.deleteMany( { a: { $lt: 5 } } ) // Remove three more

db.testcol.deleteOne( { a: { $lt: 10 } } ) // Remove one more

db.testcol.deleteMany() // Error: requires a query document.

db.testcol.deleteMany( { } ) // All documents removed

Dropping a Collection

• You can drop an entire collection with db.<COLLECTION>.drop().
• The collection and all documents will be deleted.
• It will also remove any metadata associated with that collection.
• Indexes are one type of metadata removed.
• All collection and index files are removed and the allocated space is reclaimed.
  – WiredTiger only!
• More on metadata later.

Example: Dropping a Collection

db.colToBeDropped.insertOne( { a: 1 } )
show collections // Shows the colToBeDropped collection

db.colToBeDropped.drop()
show collections // collection is gone

Dropping a Database

• You can drop an entire database with db.dropDatabase().
• This drops the database on which the method is called.
• It also deletes the associated data files from disk, freeing disk space.
• Beware that in the mongo shell, this does not change database context.

Example: Dropping a Database

use tempDB

db.testcol1.insertOne( { a: 1 } )
db.testcol2.insertOne( { a: 1 } )

show dbs // Here they are
show collections // Shows the two collections

db.dropDatabase()
show collections // No collections
show dbs // The db is gone

use sample // take us back to the sample db

3.2 Reading Documents

Learning Objectives

Upon completing this module students should understand:

• The query-by-example paradigm of MongoDB
• How to query on array elements
• How to query embedded documents using dot notation
• How the mongo shell and drivers use cursors
• Projections
• Cursor methods: .count(), .sort(), .skip(), .limit()

The find() Method

• This is the fundamental method by which we read data from MongoDB.
• We have already used it in its basic form.
• find() returns a cursor that enables us to iterate through all documents matching a query.
• We will discuss cursors later.

Query by Example

• To query MongoDB, specify a document containing the key/value pairs you want to match.
• You need only specify values for fields you care about.
• Other fields will not be used to exclude documents.
• The result set will include all documents in a collection that match.

Example: Querying by Example

Experiment with the following sequence of commands.

db.movies.drop()
db.movies.insertMany( [
  { "title": "Jaws", "year": 1975, "imdb_rating": 8.1 },
  { "title": "Batman", "year": 1989, "imdb_rating": 7.6 }
] )

db.movies.find()

db.movies.find( { "year": 1975 } )

// Multiple Batman movies from different years, find the correct one
db.movies.find( { "year": 1989, "title": "Batman" } )
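The matching rule behind query by example can be sketched in plain JavaScript (Node.js). This is a simplified model covering top-level scalar fields only; `matchesExample` is a hypothetical helper and does not reproduce the server's full matching semantics.

```javascript
// Sketch of query-by-example for top-level scalar fields only
// (no arrays, operators, or dot notation). matchesExample is a
// hypothetical helper, not part of the shell.
function matchesExample(doc, query) {
  // Every key in the query must match; fields absent from the query are ignored.
  return Object.keys(query).every((key) => doc[key] === query[key]);
}

const movies = [
  { title: "Jaws", year: 1975, imdb_rating: 8.1 },
  { title: "Batman", year: 1989, imdb_rating: 7.6 },
];

const find = (query) => movies.filter((doc) => matchesExample(doc, query));
console.log(find({}).length);                              // 2
console.log(find({ year: 1975 }).map((d) => d.title));     // [ 'Jaws' ]
console.log(find({ year: 1989, title: "Jaws" }).length);   // 0
```

An empty query document matches everything, and each added key/value pair narrows the result set.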

Querying Arrays

• In MongoDB you may query array fields.
• Specify a single value you expect to find in that array in desired documents.
• Alternatively, you may specify an entire array in the query document.
• As we will see later, there are also several operators that enhance our ability to query array fields.

Example: Querying Arrays

db.movies.drop()
db.movies.insertMany( [
  { "title": "Batman", "category": [ "action", "adventure" ] },
  { "title": "Godzilla", "category": [ "action", "adventure", "sci-fi" ] },
  { "title": "Home Alone", "category": [ "family", "comedy" ] }
] )

// Match documents where "category" contains the value specified
db.movies.find( { "category": "action" } )

// Match documents where "category" equals the value specified
db.movies.find( { "category": [ "action", "sci-fi" ] } ) // no documents

// only the second document
db.movies.find( { "category": [ "action", "adventure", "sci-fi" ] } )

Querying with Dot Notation

• Dot notation is used to query on fields in embedded documents.

• The syntax is:

"field1.field2" : value

• Put quotes around the field name when using dot notation.
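One way to picture dot notation is as a path lookup that also descends into arrays of embedded documents. The sketch below is plain JavaScript under that assumption; valuesAtPath and matchesPath are hypothetical names, and numeric array indexes in a path are not handled.

```javascript
// Collect every value reachable through a dotted path.
// Hypothetical helper; numeric array indexes are not supported.
function valuesAtPath(value, path) {
  const parts = Array.isArray(path) ? path : path.split(".");
  if (parts.length === 0) return [value];
  if (Array.isArray(value)) {
    // Descend into each element of an array of embedded documents.
    return value.flatMap((element) => valuesAtPath(element, parts));
  }
  if (value === null || typeof value !== "object") return [];
  const [head, ...rest] = parts;
  if (!(head in value)) return [];
  return valuesAtPath(value[head], rest);
}

// A document matches when any value at the path equals the expected value.
function matchesPath(doc, path, expected) {
  return valuesAtPath(doc, path).includes(expected);
}

const avatar = { title: "Avatar", box_office: { gross: 760, budget: 237 } };
const starWars = {
  title: "Star Wars",
  filming_locations: [
    { city: "Ajim", country: "Tunisia" },
    { city: "Yuma", country: "USA" },
  ],
};

console.log(matchesPath(avatar, "box_office.gross", 760)); // true
console.log(matchesPath(starWars, "filming_locations.country", "USA")); // true
```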

Example: Querying with Dot Notation

db.movies.insertMany(

[{

"title" :"Avatar",

"box_office" :{"gross" :760,

"budget" :237,

"opening_weekend" :77

}

},

{

"title" :"E.T.",

"box_office" :{"gross" :349,

"budget" :10.5,

"opening_weekend" :14

}

}

])

db.movies.find( { "box_office" :{"gross" :760 }}) // no documents: this matches only an embedded document exactly equal to { "gross" : 760 }

// dot notation

db.movies.find( { "box_office.gross" :760 }) // expected value


Example: Arrays and Dot Notation

db.movies.insertMany( [

{"title" :"E.T.",

"filming_locations" :

[{"city" :"Culver City","state" :"CA","country" :"USA" },

{"city" :"Los Angeles","state" :"CA","country" :"USA" },

{"city" :"Crescent City","state" :"CA","country" :"USA" }

]},

{"title":"Star Wars",

"filming_locations" :

[{"city" :"Ajim","state" :"Jerba","country" :"Tunisia" },

{"city" :"Yuma","state" :"AZ","country" :"USA" }

]}])

db.movies.find( { "filming_locations.country" :"USA" })// two documents

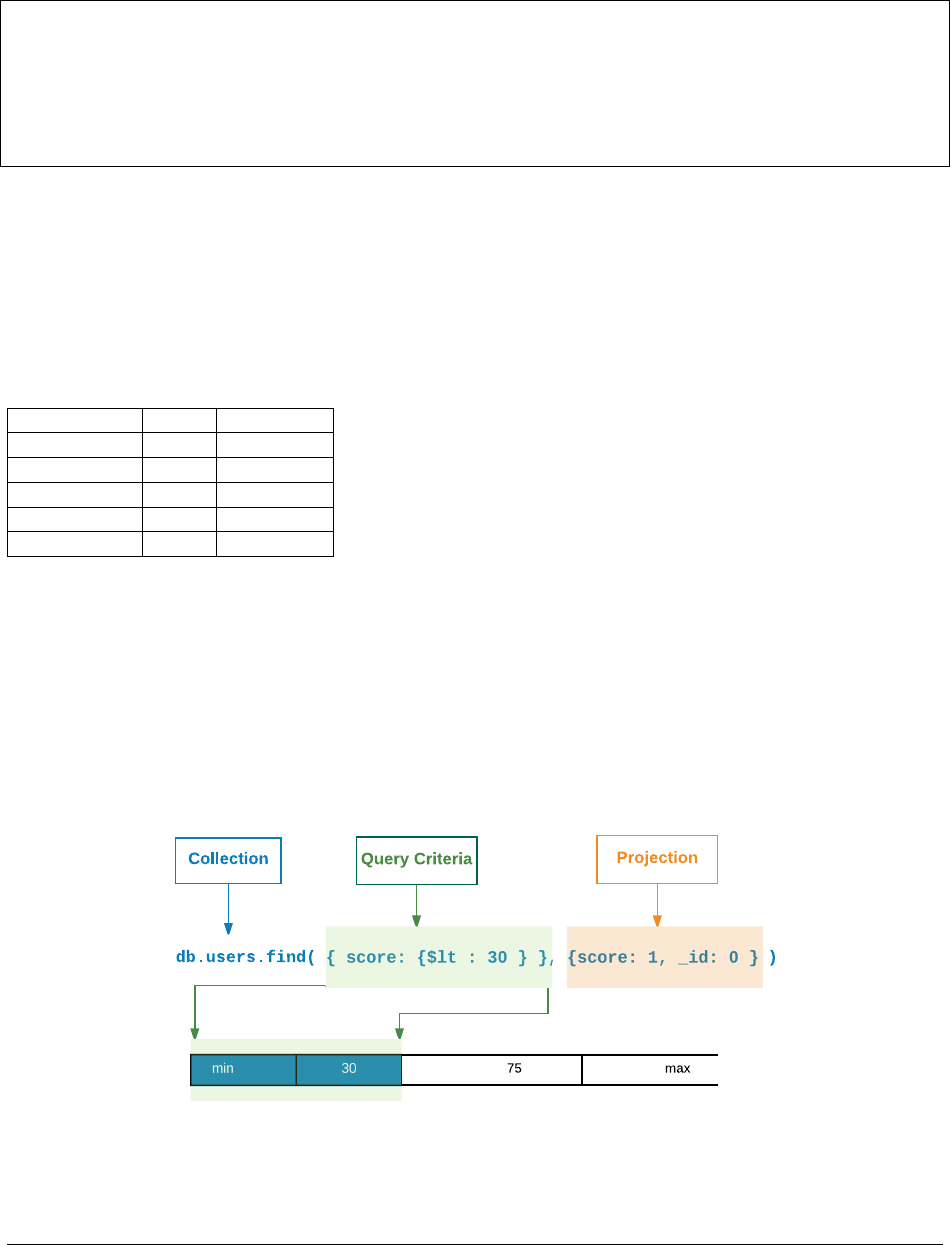

Projections

• You may choose to have only certain fields appear in result documents.

• This is called projection.

• You specify a projection by passing a second parameter to find().

Projection: Example (Setup)

db.movies.insertOne(

{

"title" :"Forrest Gump",

"category" :["drama","romance" ],

"imdb_rating" :8.8,

"filming_locations" :[

{"city" :"Savannah","state" :"GA","country" :"USA" },

{"city" :"Monument Valley","state" :"UT","country" :"USA" },

{"city" :"Los Angeles","state" :"CA","country" :"USA" }

],

"box_office" :{

"gross" :557,

"opening_weekend" :24,

"budget" :55

}

})


Projection: Example

db.movies.findOne( { "title" :"Forrest Gump" },

{"title" :1,"imdb_rating" :1})

{

"_id" :ObjectId("5515942d31117f52a5122353"),

"title" :"Forrest Gump",

"imdb_rating" :8.8

}

Projection Documents

• Include fields with fieldName: 1.

– Any field not named will be excluded,

– except _id, which must be explicitly excluded.

• Exclude fields with fieldName: 0.

– Any field not named will be included.
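The inclusion and exclusion rules above can be modeled with a small function. This is a sketch in plain JavaScript that handles top-level fields only; project is a hypothetical helper, not the server's implementation.

```javascript
// Simplified model of a projection document (top-level fields only).
// project is a hypothetical helper name.
function project(doc, projection) {
  const fields = Object.keys(projection).filter((key) => key !== "_id");
  const including = fields.some((key) => projection[key] === 1);
  const excluding = fields.some((key) => projection[key] === 0);
  if (including && excluding) {
    throw new Error("cannot mix inclusion and exclusion (except for _id)");
  }
  // _id is kept unless it is explicitly excluded.
  const keepId = projection._id !== 0;
  const result = {};
  for (const [key, value] of Object.entries(doc)) {
    if (key === "_id") {
      if (keepId) result._id = value;
    } else if (including ? projection[key] === 1 : projection[key] !== 0) {
      result[key] = value;
    }
  }
  return result;
}

const movie = { _id: 5, title: 5, imdb_rating: 5, box_office: 5 };

console.log(project(movie, { title: 1, imdb_rating: 1 })); // _id, title, imdb_rating
console.log(project(movie, { _id: 0, title: 0 })); // imdb_rating, box_office
```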

Example: Projections

for (i=1;i<=20;i++){

db.movies.insertOne(

{"_id" :i, "title" :i,

"imdb_rating" :i, "box_office" :i})

}

db.movies.find()

// no "box_office"

db.movies.find( { "_id" :3}, { "title" :1,"imdb_rating" :1})

// no "imdb_rating"

db.movies.find( { "_id" :{ $gte :10 }},{"imdb_rating" :0})

// just "title"

db.movies.find( { "_id" :4}, { "_id" :0,"title" :1})

// just "imdb_rating", "box_office"

db.movies.find( { "_id" :5}, { _id :0,"title" :0})

// Can’t mix inclusion/exclusion except _id

db.movies.find( { "_id" :6}, { "title" :1,"imdb_rating" :0})

Cursors

• When you use find(), MongoDB returns a cursor.

• A cursor is a pointer to the result set.

• You can iterate through documents in the result using next().

• By default, the mongo shell will iterate through 20 documents at a time.


Example: Introducing Cursors

db.testcol.drop()

for (i=1;i<=10000;i++){

db.testcol.insertOne( { a :Math.floor( Math.random() *100 +1),

b:Math.floor( Math.random() *100 +1)})

}

db.testcol.find()

it

it

Example: Cursor Objects in the Mongo Shell

// Assigns the cursor returned by find() to a variable x

var x=db.testcol.find()

// Displays the first document in the result set.

x.next()

// True because there are more documents in the result set.

x.hasNext()

// Assigns the next document in the result set to the variable y.

y=x.next()

// Return value is the value of the a field of this document.

y.a

// Displaying a cursor prints the next 20 documents in the result set.

x

Cursor Methods

• count(): Returns the number of documents in the result set.

• limit(): Limits the result set to the number of documents specified.

• skip(): Skips the number of documents specified.

Example: Using count()

db.testcol.drop()

for (i=1;i<=100;i++) { db.testcol.insertOne( { a :i})}

// all 100

db.testcol.count()

// just 41 docs

db.testcol.count( { a :{$lt:42 }})

// Another way of writing the same query

db.testcol.find( { a :{$lt:42 } } ).count( )


Example: Using sort()

db.testcol.drop()

for (i=1;i<=20;i++){

db.testcol.insertOne( { a :Math.floor( Math.random() *10 +1),

b:Math.floor( Math.random() *10 +1)})

}

db.testcol.find()

// sort descending; use 1 for ascending

db.testcol.find().sort( { a :-1})

// sort by b, then a

db.testcol.find().sort( { b :1,a:1})

// $natural order is just the order on disk.

db.testcol.find().sort( { $natural :1})

The skip() Method

• Skips the specified number of documents in the result set.

• The returned cursor will begin at the first document beyond the number specified.

• Regardless of the order in which you specify skip() and sort() on a cursor, sort() happens first.

The limit() Method

• Limits the number of documents in a result set to the first k.

• Specify k as the argument to limit().

• Regardless of the order in which you specify limit(), skip(), and sort() on a cursor, sort() happens first.

• Helps reduce resources consumed by queries.
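The fixed order of sort, then skip, then limit can be illustrated with a toy cursor in plain JavaScript. This sketch assumes a single numeric sort field; runCursor is a hypothetical helper, not a MongoDB API.

```javascript
// Toy model of cursor modifiers: always sort first, then skip, then limit,
// no matter the order in which the caller listed them.
// runCursor is hypothetical and supports one numeric sort field only.
function runCursor(docs, { sort = null, skip = 0, limit = 0 } = {}) {
  let result = docs.slice(); // work on a copy
  if (sort) {
    const [field, direction] = Object.entries(sort)[0];
    result.sort((x, y) => direction * (x[field] - y[field]));
  }
  result = result.slice(skip);
  if (limit > 0) result = result.slice(0, limit); // 0 means "no limit"
  return result;
}

const docs = [];
for (let i = 1; i <= 10; i++) docs.push({ a: i });

// Comparable to db.testcol.find().limit(3).skip(2).sort( { a : -1 } )
const page = runCursor(docs, { limit: 3, skip: 2, sort: { a: -1 } });
console.log(page.map((d) => d.a)); // 8, 7, 6
```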

The distinct() Method

• Returns the distinct values found for a field in a collection.

• Only works on one field at a time.

• Input is a string (not a document).


Example: Using distinct()

db.movie_reviews.drop()

db.movie_reviews.insertMany( [

{"title" :"Jaws","rating" :5},

{"title" :"Home Alone","rating" :1},

{"title" :"Jaws","rating" :7},

{"title" :"Jaws","rating" :4},

{"title" :"Jaws","rating" :8}])

db.movie_reviews.distinct( "title" )

3.3 Query Operators

Learning Objectives

Upon completing this module students should understand the following types of MongoDB query operators:

• Comparison operators

• Logical operators

• Element query operators

• Operators on arrays

Comparison Query Operators

• $lt: Exists and is less than

• $lte: Exists and is less than or equal to

• $gt: Exists and is greater than

• $gte: Exists and is greater than or equal to

• $ne: Does not exist or does but is not equal to

• $in: Exists and is in a set

• $nin: Does not exist or is not in a set
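The "exists and ..." versus "does not exist or ..." wording above matters for documents that lack the field. A minimal sketch in plain JavaScript, assuming scalar fields only (array fields and null handling are not modeled); the names below are hypothetical.

```javascript
// Simplified semantics of the comparison operators for scalar fields.
// A missing field is represented by undefined.
const comparisonOperators = {
  $lt: (value, arg) => value !== undefined && value < arg,
  $lte: (value, arg) => value !== undefined && value <= arg,
  $gt: (value, arg) => value !== undefined && value > arg,
  $gte: (value, arg) => value !== undefined && value >= arg,
  $ne: (value, arg) => value === undefined || value !== arg,
  $in: (value, arg) => value !== undefined && arg.includes(value),
  $nin: (value, arg) => value === undefined || !arg.includes(value),
};

// All operators in the condition document must hold (implicit "and").
function matchesCondition(doc, field, condition) {
  return Object.entries(condition).every(([operator, arg]) =>
    comparisonOperators[operator](doc[field], arg)
  );
}

const batman = { title: "Batman", budget: 35 };
const godzilla = { title: "Godzilla" }; // no budget field

console.log(matchesCondition(godzilla, "budget", { $lt: 50 })); // false
console.log(matchesCondition(godzilla, "budget", { $ne: 35 })); // true
```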

Example (Setup)

// insert sample data

db.movies.insertMany( [

{

"title" :"Batman",

"category" :["action","adventure" ],

"imdb_rating" :7.6,

"budget" :35

},

{

"title" :"Godzilla",

"category" :["action",

"adventure","sci-fi" ],

"imdb_rating" :6.6

},


{

"title" :"Home Alone",

"category" :["family","comedy" ],

"imdb_rating" :7.4

}

])

Example: Comparison Operators

db.movies.find()

db.movies.find( { "imdb_rating" :{ $gte :7}})

db.movies.find( { "category" :{$ne:"family" }})

db.movies.find( { "title" :{$in:["Batman","Godzilla" ]}})

db.movies.find( { "title" :{ $nin :["Batman","Godzilla" ]}})

Logical Query Operators

• $or: Match either of two or more values

• $not: Used with other operators

• $nor: Match neither of two or more values

• $and: Match both of two or more values

– This is the default behavior for queries specifying more than one condition.

– Use $and if you need to include the same operator more than once in a query.

Example: Logical Operators

db.movies.find( { $or :[

{"category" :"sci-fi" }, { "imdb_rating" :{ $gte :7}}

]})

// more complex $or, really good sci-fi movie or mediocre family movie

db.movies.find( { $or :[

{"category" :"sci-fi","imdb_rating" :{ $gte :8}},

{"category" :"family","imdb_rating" :{ $gte :7}}

]})

// find bad movies

db.movies.find( { "imdb_rating" :{ $not :{$gt:7}}})


Example: Logical Operators

// find movies within an imdb_rating range

db.movies.find( { "imdb_rating" :{$gt:5, $lte :7}}) // and is implicit

// queries can be nested, why are there no results?

db.movies.find( { $and :[

{$or:[

{"category" :"sci-fi","imdb_rating" :{ $gte :8}},

{"category" :"family","imdb_rating" :{ $gte :7}}

]},

{$or:[

{"category" :"action","imdb_rating" :{ $gte :6}}

]}

]})

Element Query Operators

• $exists: Select documents based on the existence of a particular field.

• $type: Select documents based on the BSON type of a field.

• See BSON types [6] for reference on types.

Example: Element Operators

db.movies.find( { "budget" :{ $exists :true }})

// type 1 is Double

db.movies.find( { "budget" :{ $type :1}})

// type 3 is Object (embedded document)

db.movies.find( { "budget" :{ $type :3}})

Array Query Operators

• $all: Array field must contain all values listed.

• $size: Array must have a particular size. E.g., $size : 2 means 2 elements in the array.

• $elemMatch: All conditions must be matched by at least one element in the array.

[6] http://docs.mongodb.org/manual/reference/bson-types


Example: Array Operators

db.movies.find( { "category" :{ $all :["sci-fi","action" ]}})

db.movies.find( { "category" :{ $size :3}})

Example: $elemMatch

db.movies.insertOne( {

"title" :"Raiders of the Lost Ark",

"filming_locations" :[

{"city" :"Los Angeles","state" :"CA","country" :"USA" },

{"city" :"Rome","state" :"Lazio","country" :"Italy" },

{"city" :"Florence","state" :"SC","country" :"USA" }

]})

// This query is incorrect, it won’t return what we want

db.movies.find( {

"filming_locations.city" :"Florence",

"filming_locations.country" :"Italy"

})

// $elemMatch is needed, now there are no results, this is expected

db.movies.find( {

"filming_locations" :{

$elemMatch :{

"city" :"Florence",

"country" :"Italy"

}}})
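Why the first query above matches and the $elemMatch query does not can be seen in a small model. This is a sketch in plain JavaScript, assuming equality conditions on an array of embedded documents; matchesDotted and matchesElemMatch are hypothetical helpers.

```javascript
const raiders = {
  title: "Raiders of the Lost Ark",
  filming_locations: [
    { city: "Los Angeles", state: "CA", country: "USA" },
    { city: "Rome", state: "Lazio", country: "Italy" },
    { city: "Florence", state: "SC", country: "USA" },
  ],
};

// Dotted conditions: each condition may be satisfied by a DIFFERENT element.
function matchesDotted(doc, arrayField, conditions) {
  return Object.entries(conditions).every(([key, expected]) =>
    doc[arrayField].some((element) => element[key] === expected)
  );
}

// $elemMatch: a SINGLE element must satisfy all conditions.
function matchesElemMatch(doc, arrayField, conditions) {
  return doc[arrayField].some((element) =>
    Object.entries(conditions).every(([key, expected]) => element[key] === expected)
  );
}

const florenceItaly = { city: "Florence", country: "Italy" };
console.log(matchesDotted(raiders, "filming_locations", florenceItaly)); // true
console.log(matchesElemMatch(raiders, "filming_locations", florenceItaly)); // false
```

The dotted form matches because "Florence" is found in one element and "Italy" in another; no single element is Florence, Italy.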


3.4 Lab: Finding Documents

Exercise: student_id < 65

In the sample database, how many documents in the grades collection have a student_id less than 65?

Exercise: Inspection Result “Fail” & “Pass”

In the sample database, how many documents in the inspections collection have result “Pass” or “Fail”?

Exercise: View Count > 1000

In the stories collection, write a query to find all stories where the view count is greater than 1000.

Exercise: Most comments

Find the news article that has the most comments in the stories collection.

Exercise: Television or Videos

Find all digg stories where the topic name is “Television” or the media type is “videos”. Skip the first 5 results and

limit the result set to 10.

Exercise: News or Images

Query for all digg stories whose media type is either “news” or “images” and where the topic name is “Comedy”. (For extra practice, construct two queries using different sets of operators to do this.)

3.5 Updating Documents

Learning Objectives

Upon completing this module students should understand:

• The replaceOne() method

• The updateOne() method

• The updateMany() method

• The required parameters for these methods

• Field update operators

• Array update operators

• The concept of an upsert and its use cases

• The findOneAndReplace() and findOneAndUpdate() methods


The replaceOne() Method

• Takes one document and replaces it with another

– But leaves the _id unchanged

• Takes two parameters:

– A matching document

– A replacement document

• This is, in some sense, the simplest form of update

First Parameter to replaceOne()

• Required parameters for replaceOne()

– The query parameter:

* Use the same syntax as with find()

* Only the first document found is replaced

• replaceOne() cannot delete a document

Second Parameter to replaceOne()

• The second parameter is the replacement parameter:

– The document to replace the original document

• The _id must stay the same

• You must replace the entire document

– You cannot modify just one field

– The _id is the only field carried over from the original

Example: replaceOne()

db.movies.insertOne( { title:"Batman" })

db.movies.find()

db.movies.replaceOne( { title :"Batman" }, { imdb_rating :7.7 })

db.movies.find()

db.movies.replaceOne( { imdb_rating:7.7 },

{ title :"Batman", imdb_rating:7.7 })

db.movies.find()

db.movies.replaceOne( { }, { title:"Batman" })

db.movies.find() // back in original state

db.movies.replaceOne( { }, { _id :ObjectId() } ) // error: a replacement cannot change the _id


The updateOne() Method

• Mutate one document in MongoDB using updateOne()

– Affects only the first document found

• Two parameters:

– A query document

* Same syntax as with find()

– A change document

* Operators specify the fields and changes

$set and $unset

• Use to specify fields to update for updateOne()

• If the field already exists, using $set will change its value

– If not, $set will create it, set to the new value

• Only specified fields will change

• Alternatively, remove a field using $unset

Example (Setup)

db.movies.insertMany( [

{

"title" :"Batman",

"category" :["action","adventure" ],

"imdb_rating" :7.6,

"budget" :35

},

{

"title" :"Godzilla",

"category" :["action",

"adventure","sci-fi" ],

"imdb_rating" :6.6

},

{

"title" :"Home Alone",

"category" :["family","comedy" ],

"imdb_rating" :7.4

}

])


Example: $set and $unset

db.movies.updateOne( { "title" :"Batman" },

{ $set :{"imdb_rating" :7.7 }})

db.movies.updateOne( { "title" :"Godzilla" },

{ $set :{"budget" :1}})

db.movies.updateOne( { "title" :"Home Alone" },

{ $set :{"budget" :15,

"imdb_rating" :5.5 }})

db.movies.updateOne( { "title" :"Home Alone" },

{ $unset :{"budget" :1}})

db.movies.find()

Update Operators

• $inc: Increment a field’s value by the specified amount.

• $mul: Multiply a field’s value by the specified amount.

• $rename: Rename a field.

• $set: Update one or more fields (already discussed).

• $unset: Delete a field (already discussed).

• $min: Updates the field value to a specified value if the specified value is less than the current value of the field.

• $max: Updates the field value to a specified value if the specified value is greater than the current value of the field.

• $currentDate: Set the value of a field to the current date or timestamp.
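The arithmetic operators above can be modeled on a plain object. This is a simplified sketch in plain JavaScript covering $inc, $mul, $min and $max on top-level numeric fields; applyUpdate and valueOrZero are hypothetical helpers, and the behavior for missing fields (created by $inc, $min and $max, set to 0 by $mul) is part of the model.

```javascript
// Returns the current numeric value of a field, or 0 when it is missing.
function valueOrZero(doc, field) {
  return field in doc ? doc[field] : 0;
}

// Simplified model of four update operators; returns an updated copy.
function applyUpdate(doc, update) {
  const result = { ...doc };
  for (const [field, amount] of Object.entries(update.$inc || {})) {
    // A missing field is created with the increment as its value.
    result[field] = valueOrZero(result, field) + amount;
  }
  for (const [field, factor] of Object.entries(update.$mul || {})) {
    // A missing field is created with the value 0.
    result[field] = valueOrZero(result, field) * factor;
  }
  for (const [field, value] of Object.entries(update.$min || {})) {
    // Only lowers the field (or creates it when missing).
    if (!(field in result) || value < result[field]) result[field] = value;
  }
  for (const [field, value] of Object.entries(update.$max || {})) {
    // Only raises the field (or creates it when missing).
    if (!(field in result) || value > result[field]) result[field] = value;
  }
  return result;
}

const homeAlone = { title: "Home Alone", budget: 15 };
console.log(applyUpdate(homeAlone, { $inc: { budget: 5 } }).budget); // 20
console.log(applyUpdate(homeAlone, { $min: { budget: 5 } }).budget); // 5
console.log(applyUpdate(homeAlone, { $min: { budget: 50 } }).budget); // 15
```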

Example: Update Operators

db.movies.updateOne( { title:"Batman" }, { $inc:{"imdb_rating" :2}})

db.movies.updateOne( { title:"Home Alone" }, { $inc:{"budget" :5}})

db.movies.updateOne( { title:"Batman" }, { $mul:{"imdb_rating" :4}})

db.movies.updateOne( { title:"Batman" },

{ $rename :{ budget:"estimated_budget" }})

db.movies.updateOne( { title:"Home Alone" }, { $min:{ budget:5}})

db.movies.updateOne( { title:"Home Alone" },

{ $currentDate :{ last_updated:{ $type:"timestamp" }}})

// increment movie rating by 1

db.movie_mentions.updateOne( { title:"Batman" },

{ $inc :{"imdb_rating" :1}})


The updateMany() Method

• Takes the same arguments as updateOne()

• Updates all documents that match

– updateOne() stops after the first match

– updateMany() continues until it has matched all

Warning: Without an appropriate index, you may scan every document in the collection.

Example: updateMany()

// let’s start tracking the number of sequels for each movie

db.movies.updateOne( { }, { $set :{"sequels" :0}})

db.movies.find()

// we need updateMany to change all documents

db.movies.updateMany( { }, { $set :{"sequels" :0}})

db.movies.find()

Array Element Updates by Index

• You can use dot notation to specify an array index

• You will update only that element

– Other elements will not be affected

Example: Update Array Elements by Index

// add a sample document to track mentions per hour

db.movie_mentions.insertOne(

{"title" :"E.T.",

"day" :ISODate("2015-03-27T00:00:00.000Z"),

"mentions_per_hour" :[0,0,0,0,0,0,0,

0,0,0,0,0,0,0,0,0,0,0,0,0,0,0,

0,0]

})

// set the mentions for array index 5 (the sixth hour of the day, since indexes are zero-based)

db.movie_mentions.updateOne(

{"title" :"E.T." },

{"$set" :{"mentions_per_hour.5" :2300 }})


Array Operators

• $push: Appends an element to the end of the array.

• $pushAll: Appends multiple elements to the end of the array (deprecated; $push with $each does the same).

• $pop: Removes one element from the end of the array.

• $pull: Removes all elements in the array that match a specified value.

• $pullAll: Removes all elements in the array that match any of the specified values.

• $addToSet: Appends an element to the array if not already present.

Example: Array Operators

db.movies.updateOne(

{"title" :"Batman" },

{ $push :{"category" :"superhero" }})

db.movies.updateOne(

{"title" :"Batman" },

{ $pushAll :{"category" :["villain","comic-based" ]}})

db.movies.updateOne(

{"title" :"Batman" },

{ $pop :{"category" :1}})

db.movies.updateOne(

{"title" :"Batman" },

{ $pull :{"category" :"action" }})

db.movies.updateOne(

{"title" :"Batman" },

{ $pullAll :{"category" :["villain","comic-based" ]}})

The Positional $ Operator

• $ [7] is a positional operator that specifies an element in an array to update.

• It acts as a placeholder for the first element that matches the query document.

• $ replaces the element in the specified position with the value given.

• Example:

db.<COLLECTION>.updateOne(

{<array>:value ... },

{<update operator>:{"<array>.$" :value } }

)

[7] http://docs.mongodb.org/manual/reference/operator/update/positional


Example: The Positional $ Operator

// the "action" category needs to be changed to "action-adventure"

db.movies.updateMany( { "category" :"action" },

{ $set :{"category.$" :"action-adventure" }})

Upserts

• If no document matches a write query:

– By default, nothing happens

– With upsert: true, inserts one new document

* $setOnInsert will add fields only in the insert scenario

• Works for updateOne(), updateMany(), replaceOne()

• Syntax:

db.<COLLECTION>.updateOne( <query document>,

<update document>,

{ upsert :true })

Upsert Mechanics

• Will update if documents matching the query exist

• Will insert if no documents match

– Creates a new document using equality conditions in the query document

– Adds an _id if the query did not specify one

– Performs the write on the new document

• updateMany() will only create one document

– If none match, of course
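The upsert steps listed above can be traced with a small in-memory model. This is a sketch in plain JavaScript, assuming an equality-only query and an $inc-style update; updateOneInc and makeId are hypothetical helpers, and the integer _id stands in for a generated ObjectId.

```javascript
let nextId = 1;
// Stand-in for ObjectId generation (hypothetical).
function makeId() {
  return nextId++;
}

// Simplified updateOne with an $inc update and an optional upsert.
function updateOneInc(collection, query, increments, { upsert = false } = {}) {
  const matches = (doc) => Object.keys(query).every((key) => doc[key] === query[key]);
  let target = collection.find(matches);
  let outcome = "updated";
  if (!target) {
    if (!upsert) return "no match"; // default: nothing happens
    target = { ...query }; // start from the equality conditions in the query
    if (!("_id" in target)) target._id = makeId(); // add an _id if none was given
    collection.push(target);
    outcome = "inserted";
  }
  // Perform the write, on either the matched or the newly created document.
  for (const [field, amount] of Object.entries(increments)) {
    target[field] = (field in target ? target[field] : 0) + amount;
  }
  return outcome;
}

const movies = [];
const first = updateOneInc(movies, { title: "Jaws" }, { budget: 5 });
const second = updateOneInc(movies, { title: "Jaws" }, { budget: 5 }, { upsert: true });
const third = updateOneInc(movies, { title: "Jaws" }, { budget: 5 }, { upsert: true });
console.log(first, second, third); // no match inserted updated
console.log(movies.length, movies[0].budget); // 1 10
```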

Example: Upserts

db.movies.updateOne( { "title" :"Jaws" },

{ $inc :{"budget" :5}},

{ upsert :true })

db.movies.updateMany( { "title" :"Jaws II" },

{ $inc :{"budget" :5}},

{ upsert :true })

db.movies.replaceOne( { "title" :"E.T.","category" :["scifi" ]},

{"title" :"E.T.","category" :["scifi" ], "budget" :1},

{ upsert :true })


save()

• The db.<COLLECTION>.save() method is syntactic sugar

– Similar to replaceOne(), querying the _id field

– Upsert if _id is not in the collection

• Syntax:

db.<COLLECTION>.save( <document>)

Example: save()

• If the document in the argument does not contain an _id field, then the save() method acts like the insertOne() method

– An ObjectId will be assigned to the _id field.

• If the document in the argument contains an _id field, then the save() method is equivalent to a replaceOne() with the query argument on _id and the upsert option set to true

// insert

db.movies.save( { "title" :"Beverly Hills Cops","imdb_rating" :7.3 })

// update with { upsert: true }

db.movies.save( { "_id" :1234,"title" :"Spider Man","imdb_rating" :7.3 })

Be careful with save()

Be careful not to overwrite newer data with stale data when using save(). Example:

db.movies.drop()

db.movies.insertOne( { "title" :"Jaws","imdb_rating" :7.3 })

db.movies.find( { "title" :"Jaws" })

// store the complete document in the application

doc =db.movies.findOne( { "title" :"Jaws" })

db.movies.updateOne( { "title" :"Jaws" }, { $inc:{"imdb_rating" :2}})

db.movies.find()

doc.imdb_rating =7.4

db.movies.save(doc) // just lost our incrementing of "imdb_rating"

db.movies.find()


findOneAndUpdate() and findOneAndReplace()

• Update (or replace) one document and return it

– By default, the document is returned pre-write

• Can return the state before or after the update

• Makes a read plus a write atomic

• Can be used with upsert to insert a document

findOneAndUpdate() and findOneAndReplace() Options

• The following are optional fields for the options document

• projection: <document> - select the fields to see

• sort: <document> - sort to select the first document

• maxTimeMS: <number> - how long to wait

– Returns an error, kills operation if exceeded

• upsert: <boolean> - if true, performs an upsert

Example: findOneAndUpdate()

db.worker_queue.findOneAndUpdate(

{ state :"unprocessed" },

{ $set :{"worker_id" :123,"state" :"processing" }},

{ upsert :true })

findOneAndDelete()

• Not an update operation, but fits in with findOneAnd ...

• Returns the document and deletes it.

• Example:

db.foo.drop();

db.foo.insertMany( [ { a :1}, { a :2}, { a :3}]);

db.foo.find(); // shows the documents.

db.foo.findOneAndDelete( { a :{ $lte :3}});

db.foo.find();


3.6 Lab: Updating Documents

Exercise: Pass Inspections

In the sample.inspections collection, let’s imagine that we want to do a little data cleaning.

We’ve decided to eliminate the “Completed” inspection result and use only “No Violation Issued” for such inspection

cases.

Please update all inspections accordingly.

Exercise: Set fine value

For all inspections that failed, set a fine value of 100.

Exercise: Increase fine in ROSEDALE

• Update all inspections done in the city of “ROSEDALE”.

• For failed inspections, raise the “fine” value by 150.

Exercise: Give a pass to “MongoDB”

• Today MongoDB got a visit from the inspectors.

• We passed, of course.

• So go ahead and update “MongoDB” and set the result to “AWESOME” and give a corresponding certificate.

• The inspector may not have uploaded the basic details for “MongoDB”, so ensure the update takes place even if “MongoDB” isn’t in the collection.

• MongoDB’s information is:

business name: MongoDB

id: 10407-2017-ENFO

address:

city: New York, zip: 10036, street: 43, number: 229

Exercise: Updating Array Elements

Insert a document representing product metrics for a backpack:

db.product_metrics.insertOne(

{ name :"backpack",

purchasesPast7Days:[0,0,0,0,0,0,0]})

Each 0 within the “purchasesPast7Days” field corresponds to a day of the week. The first element is Monday, the second element is Tuesday, etc.

Write an update statement to increment the number of backpacks sold on Friday by 200.


4 Indexes

Index Fundamentals (page 50) An introduction to MongoDB indexes

Lab: Basic Indexes (page 56) A short exercise on the basics of index usage

Compound Indexes (page 57) Indexes on two or more fields

Lab: Optimizing an Index (page 62) Lab on optimizing a compound index

Multikey Indexes (page 63) Indexes on array fields

Hashed Indexes (page 67) Hashed indexes

Geospatial Indexes (page 68) Geospatial indexes: both those on legacy coordinate pairs and those supporting queries

that calculate geometries on an earth-like sphere.

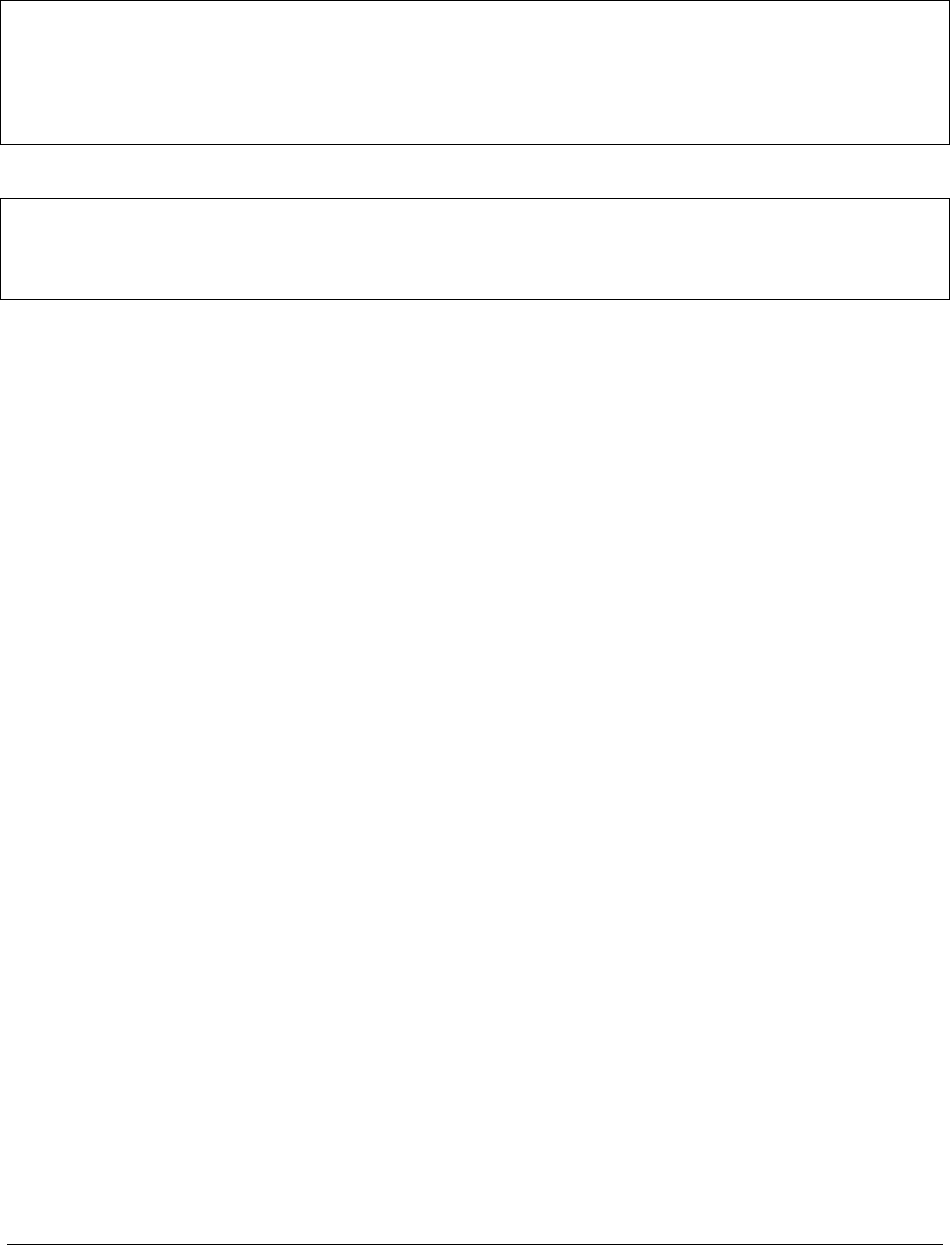

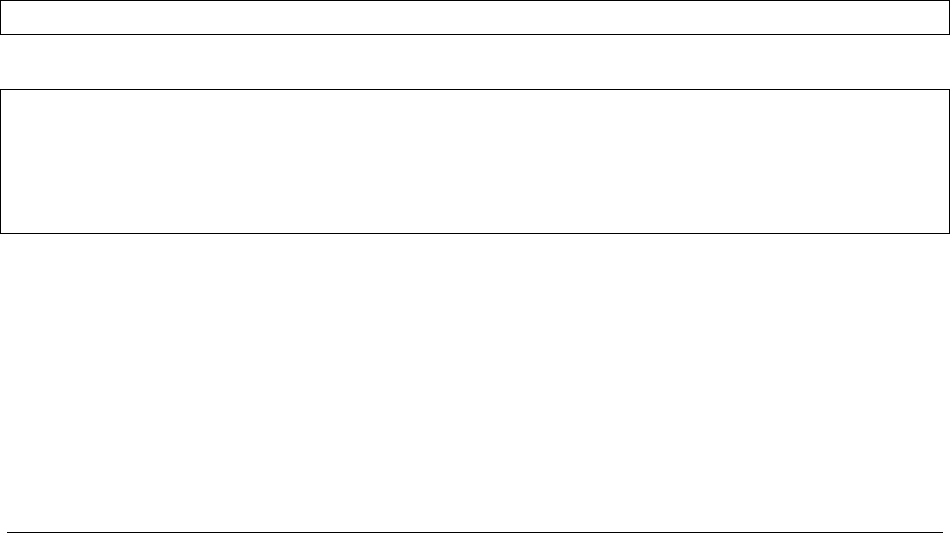

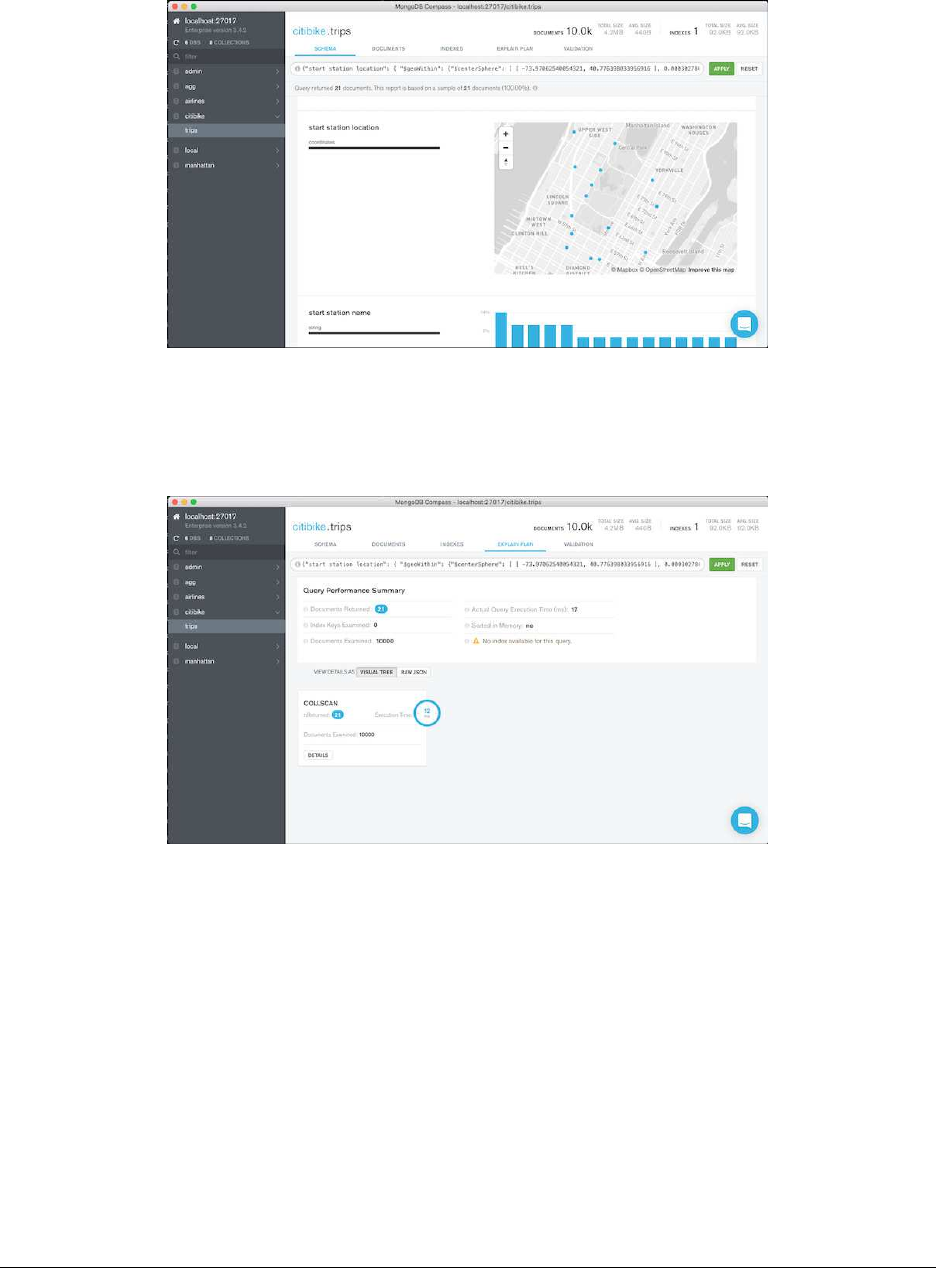

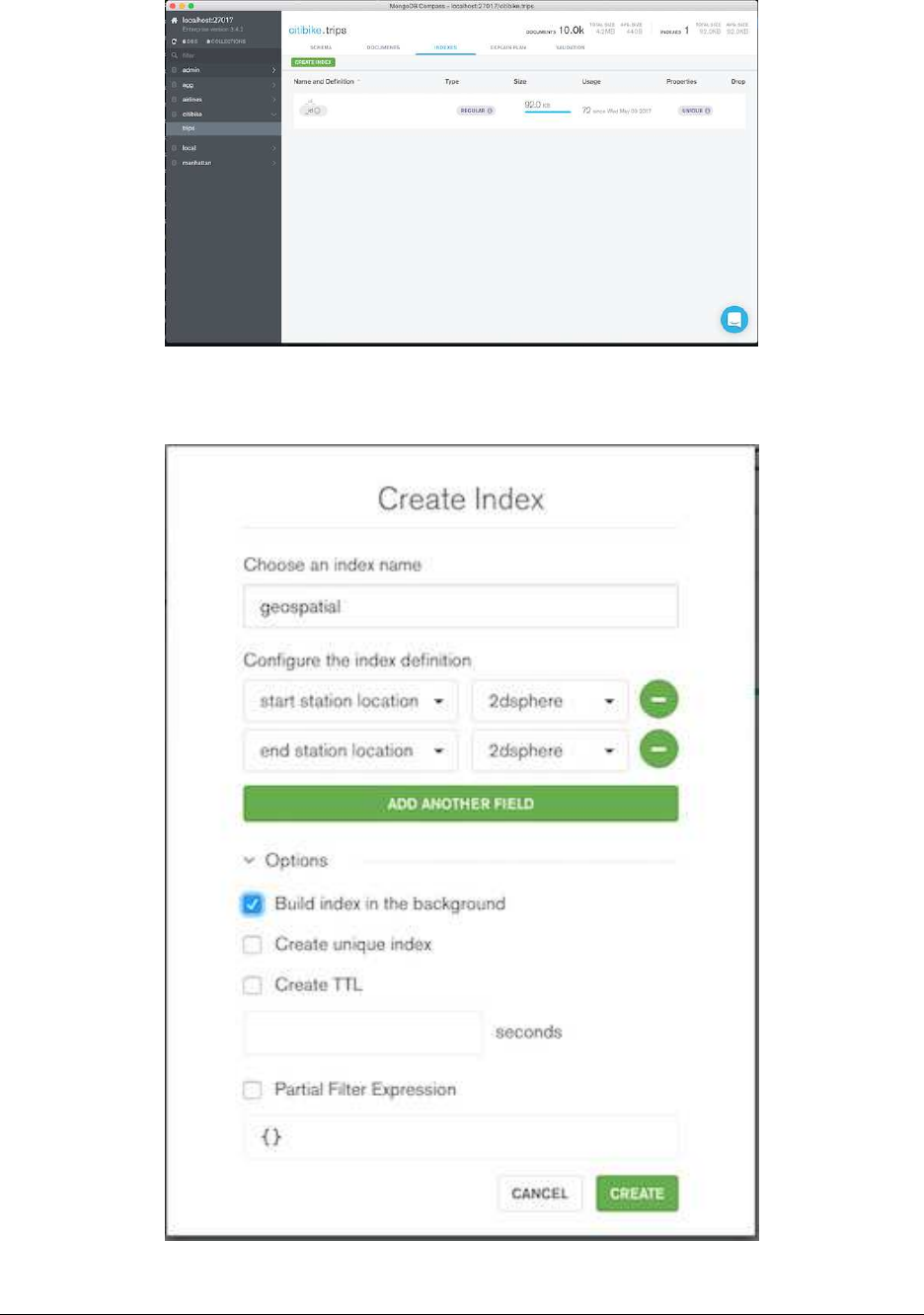

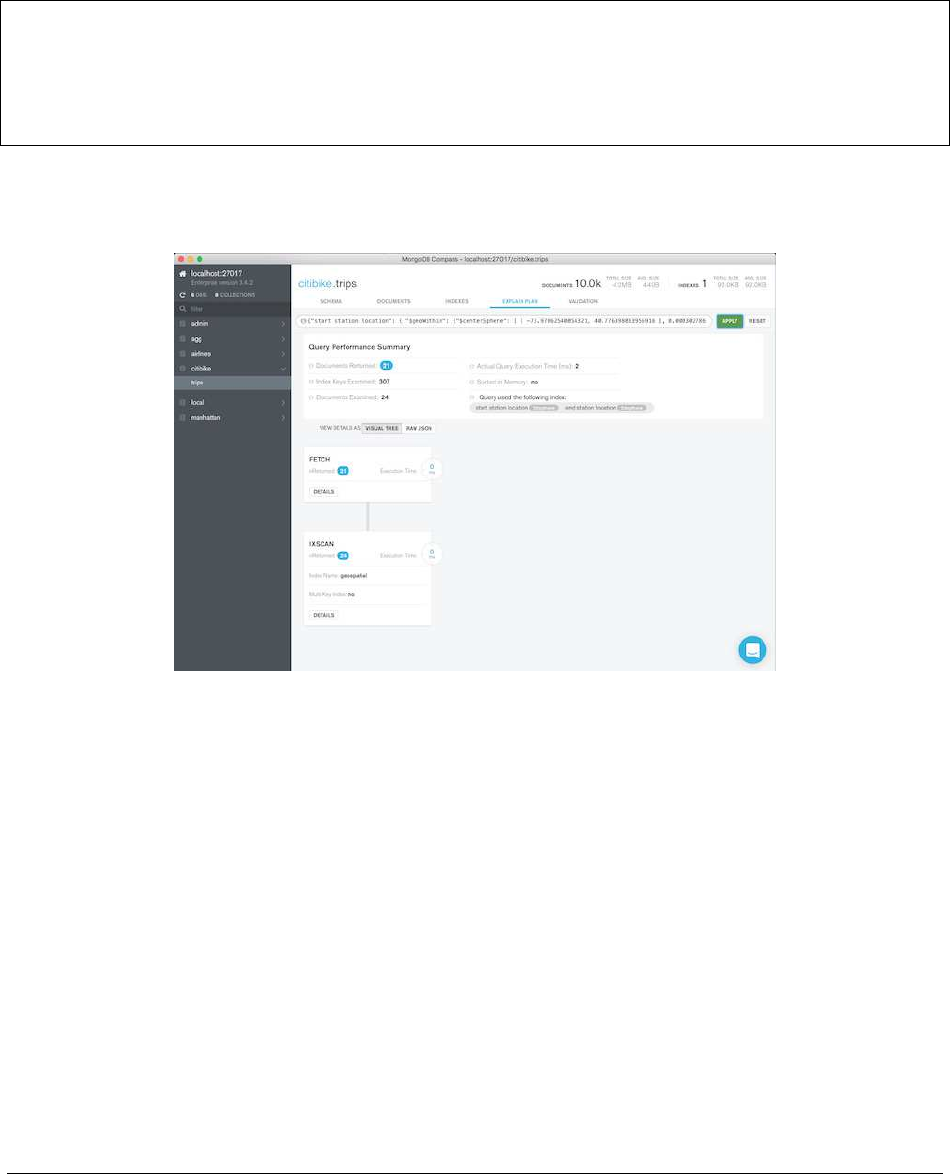

Using Compass with Indexes (page 75) Using Compass to create a geospatial index

TTL Indexes (page 79) Time-To-Live indexes

Text Indexes (page 81) Free text indexes on string fields

Partial Indexes (page 83) Partial indexes in MongoDB

Lab: Finding and Addressing Slow Operations (page 86) Lab on finding and addressing slow queries

Lab: Using explain() (page 87) Lab on using the explain operation to review execution stats

4.1 Index Fundamentals

Learning Objectives

Upon completing this module students should understand:

• The impact of indexing on read performance

• The impact of indexing on write performance

• How to choose effective indexes

• The utility of specific indexes for particular query patterns

Why Indexes?

[Figure: documents in a collection, each with a field x (8.5, 5, 17, 35, ..., 25), shown beside a tree-structured index on x whose sorted entries point to the documents.]

Index on x


Types of Indexes

• Single-field indexes

• Compound indexes

• Multikey indexes

• Geospatial indexes

• Text indexes

Exercise: Using explain()

Let’s explore what MongoDB does for the following query by using explain().

We are projecting only user.name so that the results are easy to read.

db.tweets.find( { "user.followers_count" :1000 },

{"_id" :0,"user.name":1})

db.tweets.find( { "user.followers_count" :1000 } ).explain()

Results of explain()

With the default explain() verbosity, you will see results similar to the following:

{

"queryPlanner" :{

"plannerVersion" :1,

"namespace" :"twitter.tweets",

"indexFilterSet" :false,

"parsedQuery" :{

"user.followers_count" :{

"$eq" :1000

}

},

Results of explain() - Continued

"winningPlan" :{

"stage" :"COLLSCAN",

"filter" :{

"user.followers_count" :{

"$eq" :1000

}

},

"direction" :"forward"

},

"rejectedPlans" :[]

},

...

}


explain() Verbosity Can Be Adjusted

• queryPlanner (default): determines the winning query plan but does not execute the query

• executionStats: executes the query and gathers statistics

• allPlansExecution: runs all candidate plans to completion and gathers statistics

explain("executionStats")

>db.tweets.find( { "user.followers_count" :1000 })

.explain("executionStats")

Now we have query statistics:

..

"executionStats" :{

"executionSuccess" :true,

"nReturned" :8,

"executionTimeMillis" :107,

"totalKeysExamined" :0,

"totalDocsExamined" :51428,

"executionStages" :{

"stage" :"COLLSCAN",

"filter" :{

"user.followers_count" :{

"$eq" :1000

}

},

explain("executionStats") - Continued

"nReturned" :8,

"executionTimeMillisEstimate" :100,

"works" :51430,

"advanced" :8,

"needTime" :51421,

"needFetch" :0,

"saveState" :401,

"restoreState" :401,

"isEOF" :1,

"invalidates" :0,

"direction" :"forward",

"docsExamined" :51428

}

...

}


explain("executionStats") Output

• nReturned: number of documents returned by the query

• totalDocsExamined: number of documents touched during the query

• totalKeysExamined: number of index keys scanned

• A totalKeysExamined or totalDocsExamined value much higher than nReturned indicates we need a better index

• Based on the explain() output, this query would benefit from a better index
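The comparison described above can be made concrete by computing how much work was done per returned document. A minimal sketch in plain JavaScript using the numbers from the explain("executionStats") output shown earlier; scanEfficiency is a hypothetical helper, and no particular threshold is implied.

```javascript
// Work done per returned document, from an executionStats-like object.
// scanEfficiency is a hypothetical helper name.
function scanEfficiency(stats) {
  const returned = Math.max(stats.nReturned, 1); // avoid dividing by zero
  return {
    docsPerResult: stats.totalDocsExamined / returned,
    keysPerResult: stats.totalKeysExamined / returned,
  };
}

// Values taken from the COLLSCAN output for the followers_count query.
const stats = { nReturned: 8, totalKeysExamined: 0, totalDocsExamined: 51428 };
const efficiency = scanEfficiency(stats);

console.log(efficiency.docsPerResult); // 6428.5 documents examined per result
console.log(efficiency.keysPerResult); // 0, no index was used
```

A ratio close to 1 suggests the index supports the query well; thousands of documents examined per result, as here, points to a missing or unsuitable index.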

Other Operations

In addition to find(), we often want to use explain() to understand how other operations will be handled.

•aggregate()

•count()

•group()

•update()

•remove()

•findAndModify()

•insert()

db.<COLLECTION>.explain()

db.<COLLECTION>.explain() returns an ExplainableCollection.

>var explainable =db.tweets.explain()

>explainable.find( { "user.followers_count" :1000 })

equivalent to

>db.tweets.explain().find( { "user.followers_count" :1000 })

also equivalent to

>db.tweets.find( { "user.followers_count" :1000 } ).explain()


Using explain() for Write Operations

Simulate the number of writes that would have occurred and determine the index(es) used:

>db.tweets.explain("executionStats").remove( { "user.followers_count" :1000 })

>db.tweets.explain("executionStats").update( { "user.followers_count" :1000 },

{ $set :{"large_following" :true } }, { multi:true })

Single-Field Indexes

• Single-field indexes are based on a single field of the documents in a collection.

• The field may be a top-level field.

• You may also create an index on fields in embedded documents.

Creating an Index

The following creates a single-field index on user.followers_count.

db.tweets.createIndex( { "user.followers_count" :1})

db.tweets.find( { "user.followers_count" :1000 } ).explain()

explain() now indicates a substantial performance improvement in handling this type of query.

Listing Indexes

List indexes for a collection:

db.tweets.getIndexes()

List index keys:

db.tweets.getIndexKeys()

Indexes and Read/Write Performance

• Indexes improve read performance for queries that are supported by the index.

• Inserts will be slower when there are indexes that MongoDB must also update.

• The speed of updates may be improved because MongoDB will not need to do a collection scan to find target documents.

• An index is modified any time a document:

– Is inserted (applies to all indexes)

– Is deleted (applies to all indexes)

– Is updated in such a way that its indexed field changes


Index Limitations

• You can have up to 64 indexes per collection.

• You should NEVER be anywhere close to that upper bound.

• Write performance will degrade to unusable somewhere between 20 and 30 indexes.

Use Indexes with Care

• Every query should use an index.

• Every index should be used by a query.

• Any write that touches an indexed field will update every index that touches that field.

• Indexes require RAM.

• Be mindful about the choice of key.

Additional Index Options

•Sparse

•Unique

•Background

Sparse Indexes in MongoDB

• Sparse indexes only contain entries for documents that have the indexed field.

db.<COLLECTION>.createIndex(

{ field_name :1},

{ sparse :true })
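What "only contain entries for documents that have the indexed field" means can be shown with a toy index. This is a sketch in plain JavaScript with made-up sample documents; sparseIndexEntries is a hypothetical helper and assumes a numeric field.

```javascript
// Toy sparse index: one entry per document that HAS the field, sorted by key.
// sparseIndexEntries is a hypothetical helper; sample data is illustrative.
function sparseIndexEntries(docs, field) {
  return docs
    .filter((doc) => field in doc) // documents without the field get no entry
    .map((doc) => ({ key: doc[field], title: doc.title }))
    .sort((x, y) => x.key - y.key);
}

const movies = [
  { title: "Batman", budget: 35 },
  { title: "Godzilla" }, // no budget field
  { title: "Home Alone", budget: 15 },
];

const entries = sparseIndexEntries(movies, "budget");
console.log(entries.length); // 2
console.log(entries.map((entry) => entry.title)); // Home Alone, then Batman
```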

Defining Unique Indexes

• Enforce a unique constraint on the index

– On a per-collection basis

• Can’t insert documents with a duplicate value for the field

– Or update to a duplicate value

• No duplicate values may exist prior to defining the index

db.<COLLECTION>.createIndex(

{ field_name :1},

{ unique :true })


Building Indexes in the Background

• Building indexes in the foreground is a blocking operation.

• Background index creation is non-blocking; however, it takes longer to build.

• A background-built index is initially larger, or less compact, than an index built in the foreground.

db.<COLLECTION>.createIndex(

{ field_name :1},

{ background :true })

4.2 Lab: Basic Indexes

Exercise: Creating a Basic Index

• Begin by importing the routes collection from the USB drive into a running mongod process

• You should import 66985 documents

# if no mongod running

mkdir -p data/db

mongod --port 30000 --dbpath data/db --logpath data/mongod.log --logappend --fork

# end if no mongod running

mongoimport --drop -d airlines -c routes routes.json

Executing a Query

• With the documents inserted, perform the following two queries, finding all routes for Delta

db.routes.find({"airline.id":2009})

db.routes.find({"airline.id":2009}).explain("executionStats")

Creating an Index

• Create an index on the routes collection

• The index should be on the "airline.id" key, in descending order

• Rerun the query with explain

• Verify that the newly created index supports the query


4.3 Compound Indexes

Learning Objectives

Upon completing this module students should understand:

• What a compound index is.

• How compound indexes are created.

• The importance of considering field order when creating compound indexes.

• How to efficiently handle queries involving some combination of equality matches, ranges, and sorting.

• Some limitations on compound indexes.

Introduction to Compound Indexes

• It is common to create indexes based on more than one field.

• These are called compound indexes.

• You may use up to 31 fields in a compound index.

• You may not use hashed index fields.

The Order of Fields Matters

Specifically we want to consider how the index will be used for:

• Equality tests, e.g.,

db.movies.find( { "budget" :7,"imdb_rating" :8})

• Range queries, e.g.,

db.movies.find( { "budget" :10,"imdb_rating" :{$lt:9}})

• Sorting, e.g.,

db.movies.find( { "budget" :10,"imdb_rating" :6}

).sort( { "imdb_rating" :-1})


Designing Compound Indexes

• Let’s look at some guiding principles for building compound indexes.

• These will generally produce a good if not optimal index.

• You can optimize after a little experimentation.

• We will explore this in the context of a running example.

Example: A Simple Message Board

Requirements:

• Find all messages in a specified timestamp range.

• Select for whether the messages are anonymous or not.

• Sort by rating from highest to lowest.

Load the Data

a=[{"timestamp" :1,"username" :"anonymous","rating" :3},

{"timestamp" :2,"username" :"anonymous","rating" :5},

{"timestamp" :3,"username" :"sam","rating" :1},

{"timestamp" :4,"username" :"anonymous","rating" :2},

{"timestamp" :5,"username" :"martha","rating" :5}]

db.messages.insertMany(a)

Start with a Simple Index

Start by building an index on { timestamp : 1 }

db.messages.createIndex( { timestamp :1}, { name :"myindex" })

Now let’s query for messages with timestamp in the range 2 through 4 inclusive.

db.messages.find( { timestamp :{ $gte :2, $lte :4} } ).explain("executionStats")

Analysis:

• The explain plan shows good performance, i.e. totalKeysExamined = n, where n is the number of documents returned (nReturned).

• However, this does not satisfy our query.

• Need to query again with {username: "anonymous"} as part of the query.


Query Adding username

Let’s add the username field to our query.

db.messages.find( { timestamp :{ $gte :2, $lte :4},

username :"anonymous" } ).explain("executionStats")

totalKeysExamined > n.

Include username in Our Index

db.messages.dropIndex( "myindex" );

db.messages.createIndex( { timestamp :1, username :1},

{ name :"myindex" })

db.messages.find( { timestamp :{ $gte :2, $lte :4},

username :"anonymous" } ).explain("executionStats")

totalKeysExamined is still > n. Why?

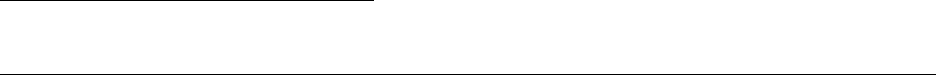

totalKeysExamined > n

timestamp    username
1            “anonymous”
2            “anonymous”
3            “sam”
4            “anonymous”
5            “martha”

A Different Compound Index

Drop the index and build a new one with username as the first field.

db.messages.dropIndex( "myindex" );

db.messages.createIndex( { username :1, timestamp :1},

{ name :"myindex" })

db.messages.find( { timestamp :{ $gte :2, $lte :4},

username :"anonymous" } ).explain("executionStats")

totalKeysExamined is 2. n is 2.


totalKeysExamined == n

| username | timestamp |
| --- | --- |
| "anonymous" | 1 |
| "anonymous" | 2 |
| "anonymous" | 4 |
| "martha" | 5 |
| "sam" | 3 |
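The effect of field order can be sketched in plain JavaScript. This is a simplified model, not MongoDB's implementation: it assumes the scan reads one contiguous run of index entries from the first matching entry to the last, whereas the server may seek within compound bounds and report different counts. `compareBy` and `simulateScan` are hypothetical helpers introduced for illustration.

```javascript
const messages = [
  { timestamp: 1, username: "anonymous", rating: 3 },
  { timestamp: 2, username: "anonymous", rating: 5 },
  { timestamp: 3, username: "sam", rating: 1 },
  { timestamp: 4, username: "anonymous", rating: 2 },
  { timestamp: 5, username: "martha", rating: 5 },
];

// Compare two documents field by field, in index-key order (ascending).
function compareBy(fields) {
  return (a, b) => {
    for (const f of fields) {
      if (a[f] < b[f]) return -1;
      if (a[f] > b[f]) return 1;
    }
    return 0;
  };
}

// Simplified model: sort entries in index order, then scan one contiguous
// range from the first matching entry to the last matching entry.
function simulateScan(docs, indexFields, matches) {
  const entries = [...docs].sort(compareBy(indexFields));
  const hits = entries.map(matches);
  const first = hits.indexOf(true);
  const last = hits.lastIndexOf(true);
  const keysExamined = first === -1 ? 0 : last - first + 1;
  const nReturned = hits.filter(Boolean).length;
  return { keysExamined, nReturned };
}

const query = (d) =>
  d.timestamp >= 2 && d.timestamp <= 4 && d.username === "anonymous";

const tsFirst = simulateScan(messages, ["timestamp", "username"], query);
const userFirst = simulateScan(messages, ["username", "timestamp"], query);

console.log(tsFirst);   // { keysExamined: 3, nReturned: 2 }
console.log(userFirst); // { keysExamined: 2, nReturned: 2 }
```

With username first, the matching entries sit next to each other in the index, so the modeled scan touches no entry it does not return.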

Let Selectivity Drive Field Order

• Order fields in a compound index from most selective to least selective.

• Usually, this means equality fields before range fields.

• When dealing with multiple equality values, start with the most selective.

• If a common range query is more selective instead (rare), specify the range component first.

Adding in the Sort

Finally, let’s add the sort and run the query.

db.messages.find( {
  timestamp: { $gte: 2, $lte: 4 },
  username: "anonymous"
} ).sort( { rating: -1 } ).explain("executionStats");

• Note that the winningPlan includes a SORT stage.

• This means that MongoDB had to perform a sort in memory.

• In-memory sorts can degrade performance significantly.

  – Especially if used frequently

  – In-memory sorts that use > 32 MB will abort
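Checking a plan for a SORT stage can be automated. The sketch below is plain JavaScript; `hasStage` is a hypothetical helper, and `samplePlan` is a hand-written, abbreviated plan for illustration only (real explain output carries more stages and fields). It relies on child stages being nested under `inputStage` or `inputStages`, as in the `winningPlan` document.

```javascript
// Walk a plan tree and report whether any stage has the given name.
// Child stages appear under `inputStage` (one child) or `inputStages` (several).
function hasStage(plan, stageName) {
  if (!plan) return false;
  if (plan.stage === stageName) return true;
  const children = plan.inputStages || (plan.inputStage ? [plan.inputStage] : []);
  return children.some((child) => hasStage(child, stageName));
}

// Hand-written, abbreviated plan for illustration only (not real server output).
const samplePlan = {
  stage: "SORT",
  sortPattern: { rating: -1 },
  inputStage: {
    stage: "FETCH",
    inputStage: { stage: "IXSCAN", indexName: "myindex" },
  },
};

console.log(hasStage(samplePlan, "SORT"));     // true
console.log(hasStage(samplePlan, "COLLSCAN")); // false
```

In the shell, the helper could be given the `queryPlanner.winningPlan` field of an explain result.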

In-Memory Sorts

Let’s modify the index again to allow the database to sort for us.

db.messages.dropIndex( "myindex" );

db.messages.createIndex( { username: 1, timestamp: 1, rating: 1 },
                         { name: "myindex" } );

db.messages.find( {
  timestamp: { $gte: 2, $lte: 4 },
  username: "anonymous"
} ).sort( { rating: -1 } ).explain("executionStats");

• The explain plan remains unchanged, because the sort field comes after the range fields.

• The index does not store entries in order by rating.

• Note that this requires us to consider a tradeoff.


Avoiding an In-Memory Sort

Rebuild the index as follows.

db.messages.dropIndex( "myindex" );

db.messages.createIndex( { username: 1, rating: 1, timestamp: 1 },
                         { name: "myindex" } );

db.messages.find( {
  timestamp: { $gte: 2, $lte: 4 },
  username: "anonymous"
} ).sort( { rating: -1 } ).explain("executionStats");

• We no longer have an in-memory sort, but need to examine more keys.

• totalKeysExamined is 3 and n is 2.

• This is the best we can do in this situation and this is fine.

• However, if totalKeysExamined is much larger than n, this might not be the best index.

No need for stage: SORT

| username | rating | timestamp |
| --- | --- | --- |
| "anonymous" | 2 | 4 |
| "anonymous" | 3 | 1 |
| "anonymous" | 5 | 2 |
| "martha" | 5 | 5 |
| "sam" | 1 | 3 |
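The tradeoff between the two three-field indexes can be sketched in plain JavaScript. This is a simplified model under stated assumptions: it looks only at the "anonymous" entries and treats the index as a sorted array. `entriesFor` is a hypothetical helper, not a MongoDB API.

```javascript
const messages = [
  { timestamp: 1, username: "anonymous", rating: 3 },
  { timestamp: 2, username: "anonymous", rating: 5 },
  { timestamp: 3, username: "sam", rating: 1 },
  { timestamp: 4, username: "anonymous", rating: 2 },
  { timestamp: 5, username: "martha", rating: 5 },
];

// Index entries for the "anonymous" user, ordered by the given fields.
function entriesFor(docs, fields) {
  return docs
    .filter((d) => d.username === "anonymous")
    .sort((a, b) => {
      for (const f of fields) {
        if (a[f] !== b[f]) return a[f] < b[f] ? -1 : 1;
      }
      return 0;
    });
}

const inRange = (d) => d.timestamp >= 2 && d.timestamp <= 4;

// { username, timestamp, rating }: entries follow timestamp order,
// so the ratings are not sorted and a separate sort is still needed.
const ratingsByTimestamp = entriesFor(messages, ["username", "timestamp", "rating"])
  .map((d) => d.rating);

// { username, rating, timestamp }: walk the entries backward to get rating
// descending, then discard entries outside the timestamp range.
const scanned = entriesFor(messages, ["username", "rating", "timestamp"]).reverse();
const results = scanned.filter(inRange).map((d) => d.rating);

console.log(ratingsByTimestamp); // [ 3, 5, 2 ]
console.log(scanned.length);     // 3 keys examined
console.log(results);            // [ 5, 2 ]
```

The second ordering returns results already sorted by rating, at the cost of examining one entry that falls outside the timestamp range.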

General Rules of Thumb

• Equality before range

• Equality before sorting

• Sorting before range
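These rules of thumb can be expressed as a small function. The sketch below is plain JavaScript, and `suggestIndex` is a hypothetical helper rather than anything MongoDB provides. It only orders fields (equality, then sort, then range) and does not weigh selectivity or key counts.

```javascript
// Hypothetical helper: build an index key pattern from a query shape,
// placing equality fields first, then sort fields, then range fields.
function suggestIndex({ equality = [], sort = {}, range = [] }) {
  const keys = {};
  for (const field of equality) keys[field] = 1;
  for (const [field, direction] of Object.entries(sort)) {
    if (!(field in keys)) keys[field] = direction;
  }
  for (const field of range) {
    if (!(field in keys)) keys[field] = 1;
  }
  return keys;
}

// Shape of the message board query used in this section.
const pattern = suggestIndex({
  equality: ["username"],
  sort: { rating: -1 },
  range: ["timestamp"],
});

console.log(JSON.stringify(pattern)); // {"username":1,"rating":-1,"timestamp":1}
```

The field order matches the index built in "Avoiding an In-Memory Sort". That index declares rating ascending, which serves the same descending sort here because the entries can be walked backward.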

Covered Queries

• When a query and projection include only the indexed fields, MongoDB will return results directly from the index.

• There is no need to scan any documents or bring documents into memory.

• These covered queries can be very efficient.

Exercise: Covered Queries

db.testcol.drop()

for (i = 1; i <= 20; i++) {
  db.testcol.insertOne( { "_id": i, "title": i, "name": i,
                          "rating": i, "budget": i } )
};

db.testcol.createIndex( { "title": 1, "name": 1, "rating": 1 } )

// Not covered because _id is present.
db.testcol.find( { "title": 3 },
                 { "title": 1, "name": 1, "rating": 1 }
               ).explain("executionStats")

// Not covered because other fields may exist in matching docs.
db.testcol.find( { "title": 3 },
                 { "_id": 0, "budget": 0 } ).explain("executionStats")

// Covered query!
db.testcol.find( { "title": 3 },
                 { "_id": 0, "title": 1, "name": 1, "rating": 1 }
               ).explain("executionStats")
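The reasoning behind the three cases above can be sketched as a simplified check in plain JavaScript. `isCovered` is a hypothetical helper; it assumes no indexed field holds an array and ignores any other restrictions the server applies, so it is an illustration of the rule rather than a replacement for reading the explain output.

```javascript
// Simplified check: is a query covered by an index?
// Assumes no indexed field holds an array and ignores other restrictions.
function isCovered(indexFields, queryFields, projection) {
  const indexed = new Set(indexFields);
  // Every field the query filters on must be in the index.
  if (!queryFields.every((f) => indexed.has(f))) return false;
  // Fields explicitly included (value 1) in the projection.
  const included = Object.keys(projection).filter((f) => projection[f] === 1);
  // An exclusion-only projection returns all other fields, so it cannot be covered.
  if (included.length === 0) return false;
  // _id is returned by default unless it is explicitly excluded.
  const returned = new Set(included);
  if (projection._id !== 0) returned.add("_id");
  return [...returned].every((f) => indexed.has(f));
}

const index = ["title", "name", "rating"];

console.log(isCovered(index, ["title"], { title: 1, name: 1, rating: 1 }));         // false
console.log(isCovered(index, ["title"], { _id: 0, budget: 0 }));                    // false
console.log(isCovered(index, ["title"], { _id: 0, title: 1, name: 1, rating: 1 })); // true
```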

4.4 Lab: Optimizing an Index

Exercise: What Index Do We Need?

Run the following JavaScript file from the handouts.

mongo --shell localhost/performance performance.js

In the shell that launches, execute the following method.

performance.init()

The method above will build a sample data set in the “sensor_readings” collection. What index is needed for this query?

db.sensor_readings.find( { tstamp: { $gte: ISODate("2012-08-01"),
                                     $lte: ISODate("2012-09-01") },
                           active: true } ).limit(3)


Exercise: Avoiding an In-Memory Sort

What index is needed for the following query to avoid an in-memory sort?

db.sensor_readings.find( { active: true } ).sort( { tstamp: -1 } )

Exercise: Avoiding an In-Memory Sort, 2

What index is needed for the following query to avoid an in-memory sort?

db.sensor_readings.find(
  { x: { $in: [100, 200, 300, 400] } }
).sort( { tstamp: -1 } )

4.5 Multikey Indexes

Learning Objectives

Upon completing this module, students should understand:

• What a multikey index is

• When MongoDB will use a multikey index to satisfy a query

• How multikey indexes work

• How multikey indexes handle sorting

• Some limitations on multikey indexes

Introduction to Multikey Indexes

• A multikey index is an index on an array.

• An index entry is created for each value found in the array.

• Multikey indexes can support primitives, documents, or sub-arrays.

• There is nothing special that you need to do to create a multikey index.

• You create them using createIndex() just as you would with an ordinary single-field index.

• If there is an array as a value for an indexed field, the index will be multikey on that field.


Example: Array of Numbers

db.race_results.drop()

db.race_results.createIndex( { "lap_times": 1 } )

a = [ { "lap_times": [3, 5, 2, 8] },
      { "lap_times": [1, 6, 4, 2] },
      { "lap_times": [6, 3, 3, 8] } ]

db.race_results.insertMany( a )

// Used the index
db.race_results.find( { lap_times: 1 } ).explain()

// One document found.

// Index not used, because the index does not record array positions.
db.race_results.find( { "lap_times.2": 3 } ).explain()
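To see why a value lookup can use the index while a positional lookup cannot, it may help to model the index entries. The sketch below is plain JavaScript and a simplified model, not MongoDB's storage format. `multikeyEntries` is a hypothetical helper, and the `_id` labels "a", "b", and "c" are assumptions added so the documents can be told apart.

```javascript
// The lap_times documents from the example, with assumed _id labels.
const raceResults = [
  { _id: "a", lap_times: [3, 5, 2, 8] },
  { _id: "b", lap_times: [1, 6, 4, 2] },
  { _id: "c", lap_times: [6, 3, 3, 8] },
];

// Model of a multikey index: one (value, document) entry per distinct array
// value in each document, sorted by value. Array positions are not kept.
function multikeyEntries(docs, field) {
  const entries = [];
  for (const doc of docs) {
    const values = Array.isArray(doc[field]) ? doc[field] : [doc[field]];
    for (const value of new Set(values)) {
      entries.push({ key: value, id: doc._id });
    }
  }
  return entries.sort((x, y) => x.key - y.key);
}

const entries = multikeyEntries(raceResults, "lap_times");
const idsWithLapTime1 = entries.filter((e) => e.key === 1).map((e) => e.id);

console.log(entries.length);  // 11
console.log(idsWithLapTime1); // [ 'b' ]
```

Each entry pairs a value with a document, so the model can answer "which documents contain 1?" but holds nothing that says which array position a value came from.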

Exercise: Array of Documents, Part 1

Create a collection and add an index on the comments.rating field:

db.blog.drop()

b = [ { "comments": [
          { "name": "Bob", "rating": 1 },
          { "name": "Frank", "rating": 5.3 },
          { "name": "Susan", "rating": 3 } ] },
      { "comments": [
          { "name": "Megan", "rating": 1 } ] },
      { "comments": [
          { "name": "Luke", "rating": 1.4 },
          { "name": "Matt", "rating": 5 },
          { "name": "Sue", "rating": 7 } ] } ]

db.blog.insertMany(b)

db.blog.createIndex( { "comments": 1 } )

// vs
db.blog.createIndex( { "comments.rating": 1 } )

// for this query
db.blog.find( { "comments.rating": 5 } )
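The difference between the two indexes can be sketched by listing the keys each would hold. This is a simplified model in plain JavaScript, not MongoDB's key format; `commentKeys` and `ratingKeys` are illustrative names.

```javascript
const blog = [
  { comments: [
      { name: "Bob", rating: 1 },
      { name: "Frank", rating: 5.3 },
      { name: "Susan", rating: 3 } ] },
  { comments: [
      { name: "Megan", rating: 1 } ] },
  { comments: [
      { name: "Luke", rating: 1.4 },
      { name: "Matt", rating: 5 },
      { name: "Sue", rating: 7 } ] },
];

// Keys for an index on "comments" in this model: whole subdocuments.
const commentKeys = blog.flatMap((doc) => doc.comments);

// Keys for an index on "comments.rating": just the rating values.
const ratingKeys = blog.flatMap((doc) => doc.comments.map((c) => c.rating));

// The query { "comments.rating": 5 } looks for the number 5, which appears
// only among the rating keys; no whole-subdocument key equals 5.
console.log(ratingKeys.includes(5));               // true
console.log(commentKeys.some((key) => key === 5)); // false
```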

Exercise: Array of Documents, Part 2

For each of the three queries below:

• How many documents will be returned?

• Will it use our multikey index? Why or why not?

• If a query will not use the index, which index will it use?

db.blog.find( { "comments": { "name": "Bob", "rating": 1 } } )

db.blog.find( { "comments": { "rating": 1 } } )

db.blog.find( { "comments.rating": 1 } )


Exercise: Array of Arrays, Part 1

Add some documents and create an index simulating a player in a game moving on an X,Y grid.

db.player.drop()

db.player.createIndex( { "last_moves": 1 } )

c = [ { "last_moves": [ [1, 2], [2, 3], [3, 4] ] },
      { "last_moves": [ [3, 4], [4, 5] ] },
      { "last_moves": [ [4, 5], [5, 6] ] },
      { "last_moves": [ [3, 4] ] },
      { "last_moves": [ [4, 5] ] } ]

db.player.insertMany(c)

db.player.find()

Exercise: Array of Arrays, Part 2