Neural Network User Guide

User Manual:

Open the PDF directly: View PDF ![]() .

.

Page Count: 558 [warning: Documents this large are best viewed by clicking the View PDF Link!]

Neural Network Toolbox™

User's Guide

Mark Hudson Beale

Martin T. Hagan

Howard B. Demuth

R2018a

How to Contact MathWorks

Latest news: www.mathworks.com

Sales and services: www.mathworks.com/sales_and_services

User community: www.mathworks.com/matlabcentral

Technical support: www.mathworks.com/support/contact_us

Phone: 508-647-7000

The MathWorks, Inc.

3 Apple Hill Drive

Natick, MA 01760-2098

Neural Network Toolbox

™

User's Guide

© COPYRIGHT 1992–2018 by The MathWorks, Inc.

The software described in this document is furnished under a license agreement. The software may be used

or copied only under the terms of the license agreement. No part of this manual may be photocopied or

reproduced in any form without prior written consent from The MathWorks, Inc.

FEDERAL ACQUISITION: This provision applies to all acquisitions of the Program and Documentation by,

for, or through the federal government of the United States. By accepting delivery of the Program or

Documentation, the government hereby agrees that this software or documentation qualies as commercial

computer software or commercial computer software documentation as such terms are used or dened in

FAR 12.212, DFARS Part 227.72, and DFARS 252.227-7014. Accordingly, the terms and conditions of this

Agreement and only those rights specied in this Agreement, shall pertain to and govern the use,

modication, reproduction, release, performance, display, and disclosure of the Program and

Documentation by the federal government (or other entity acquiring for or through the federal government)

and shall supersede any conicting contractual terms or conditions. If this License fails to meet the

government's needs or is inconsistent in any respect with federal procurement law, the government agrees

to return the Program and Documentation, unused, to The MathWorks, Inc.

Trademarks

MATLAB and Simulink are registered trademarks of The MathWorks, Inc. See

www.mathworks.com/trademarks for a list of additional trademarks. Other product or brand

names may be trademarks or registered trademarks of their respective holders.

Patents

MathWorks products are protected by one or more U.S. patents. Please see

www.mathworks.com/patents for more information.

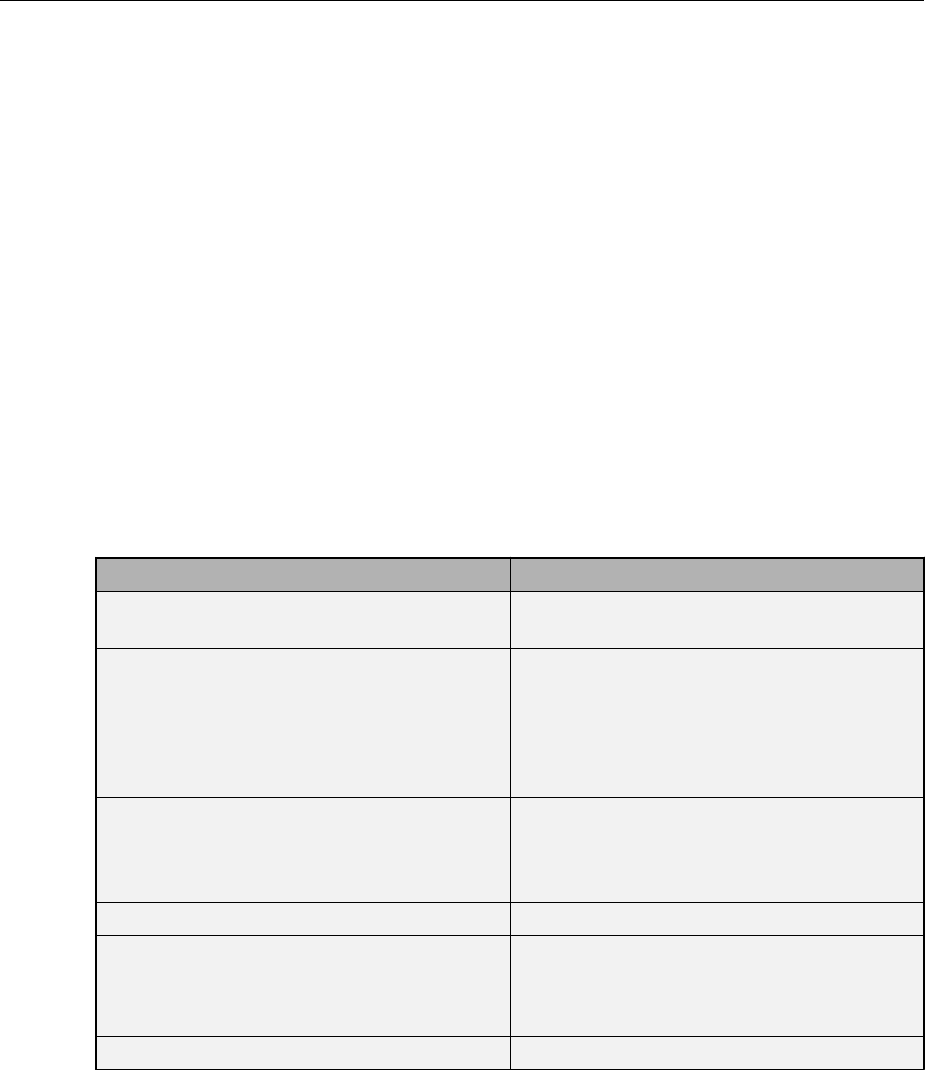

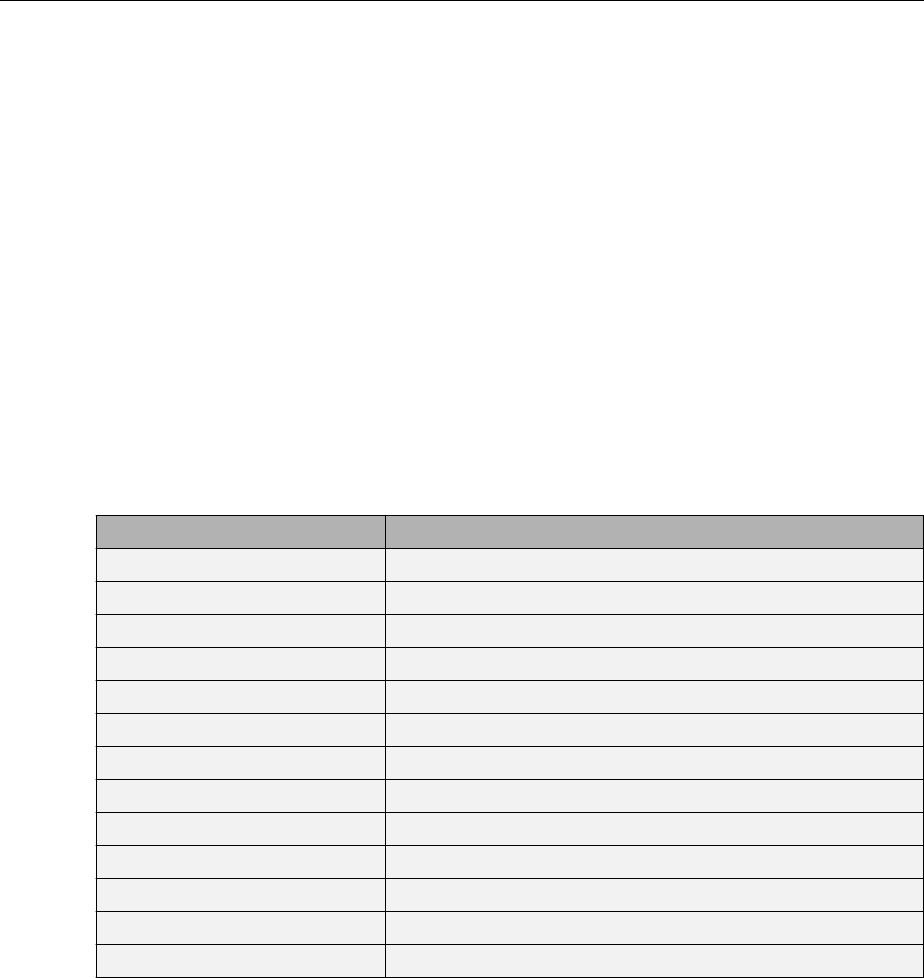

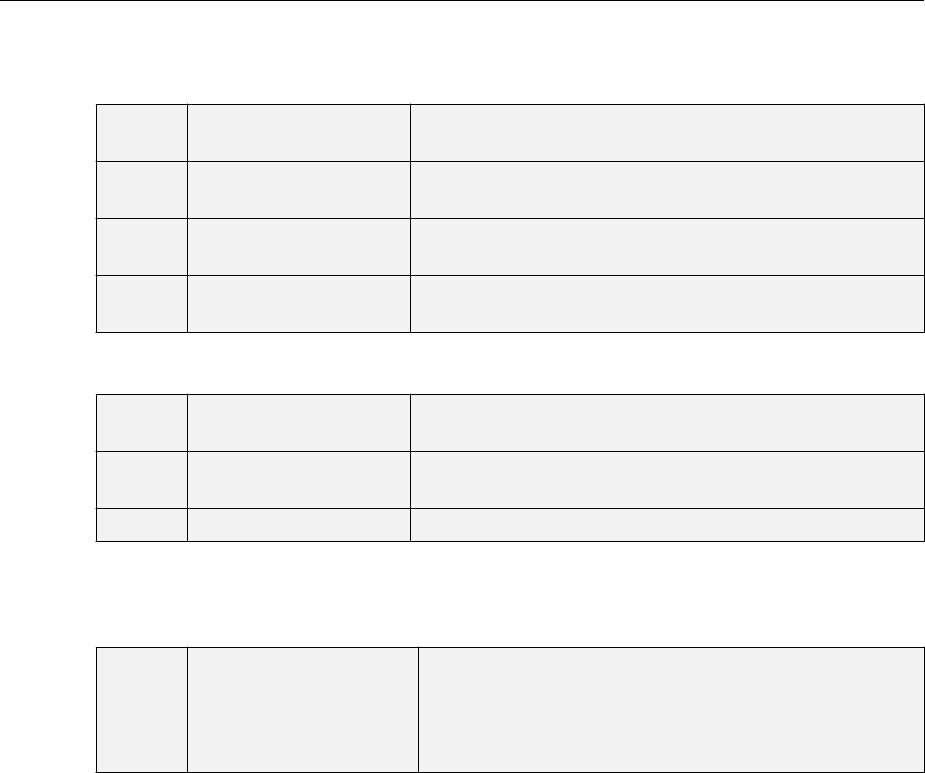

Revision History

June 1992 First printing

April 1993 Second printing

January 1997 Third printing

July 1997 Fourth printing

January 1998 Fifth printing Revised for Version 3 (Release 11)

September 2000 Sixth printing Revised for Version 4 (Release 12)

June 2001 Seventh printing Minor revisions (Release 12.1)

July 2002 Online only Minor revisions (Release 13)

January 2003 Online only Minor revisions (Release 13SP1)

June 2004 Online only Revised for Version 4.0.3 (Release 14)

October 2004 Online only Revised for Version 4.0.4 (Release 14SP1)

October 2004 Eighth printing Revised for Version 4.0.4

March 2005 Online only Revised for Version 4.0.5 (Release 14SP2)

March 2006 Online only Revised for Version 5.0 (Release 2006a)

September 2006 Ninth printing Minor revisions (Release 2006b)

March 2007 Online only Minor revisions (Release 2007a)

September 2007 Online only Revised for Version 5.1 (Release 2007b)

March 2008 Online only Revised for Version 6.0 (Release 2008a)

October 2008 Online only Revised for Version 6.0.1 (Release 2008b)

March 2009 Online only Revised for Version 6.0.2 (Release 2009a)

September 2009 Online only Revised for Version 6.0.3 (Release 2009b)

March 2010 Online only Revised for Version 6.0.4 (Release 2010a)

September 2010 Online only Revised for Version 7.0 (Release 2010b)

April 2011 Online only Revised for Version 7.0.1 (Release 2011a)

September 2011 Online only Revised for Version 7.0.2 (Release 2011b)

March 2012 Online only Revised for Version 7.0.3 (Release 2012a)

September 2012 Online only Revised for Version 8.0 (Release 2012b)

March 2013 Online only Revised for Version 8.0.1 (Release 2013a)

September 2013 Online only Revised for Version 8.1 (Release 2013b)

March 2014 Online only Revised for Version 8.2 (Release 2014a)

October 2014 Online only Revised for Version 8.2.1 (Release 2014b)

March 2015 Online only Revised for Version 8.3 (Release 2015a)

September 2015 Online only Revised for Version 8.4 (Release 2015b)

March 2016 Online only Revised for Version 9.0 (Release 2016a)

September 2016 Online only Revised for Version 9.1 (Release 2016b)

March 2017 Online only Revised for Version 10.0 (Release 2017a)

September 2017 Online only Revised for Version 11.0 (Release 2017b)

March 2018 Online only Revised for Version 11.1 (Release 2018a)

Neural Network Toolbox Design Book

Neural Network Objects, Data, and Training Styles

1

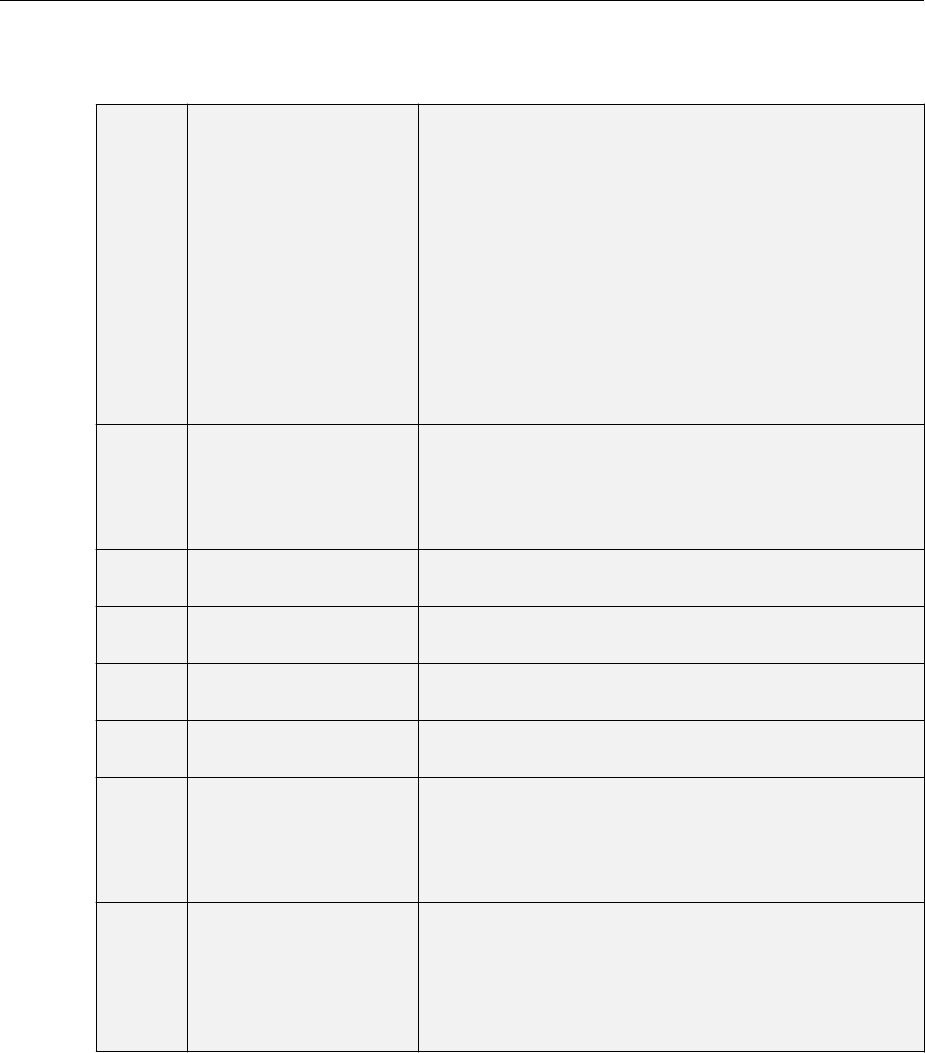

Workow for Neural Network Design .................... 1-2

Four Levels of Neural Network Design ................... 1-4

Neuron Model ....................................... 1-5

Simple Neuron .................................... 1-5

Transfer Functions ................................. 1-6

Neuron with Vector Input ............................ 1-7

Neural Network Architectures ......................... 1-11

One Layer of Neurons .............................. 1-11

Multiple Layers of Neurons ......................... 1-13

Input and Output Processing Functions ................ 1-15

Create Neural Network Object ......................... 1-17

Congure Neural Network Inputs and Outputs ........... 1-21

Understanding Neural Network Toolbox Data Structures ... 1-23

Simulation with Concurrent Inputs in a Static Network ..... 1-23

Simulation with Sequential Inputs in a Dynamic Network ... 1-24

Simulation with Concurrent Inputs in a Dynamic Network .. 1-26

Neural Network Training Concepts ..................... 1-28

Incremental Training with adapt ...................... 1-28

Batch Training ................................... 1-31

v

Contents

Training Feedback ................................ 1-34

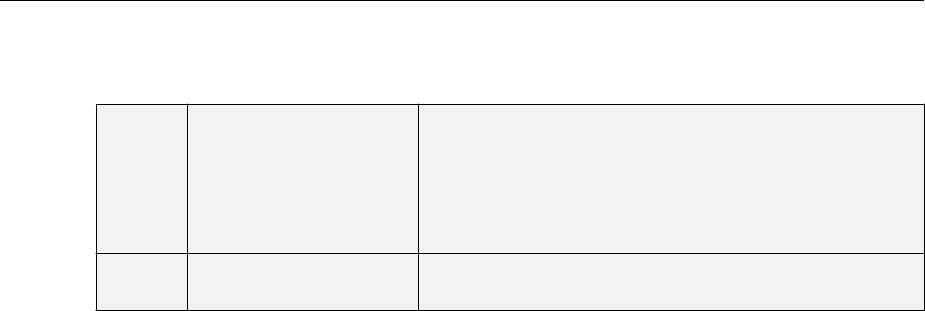

Deep Networks

2

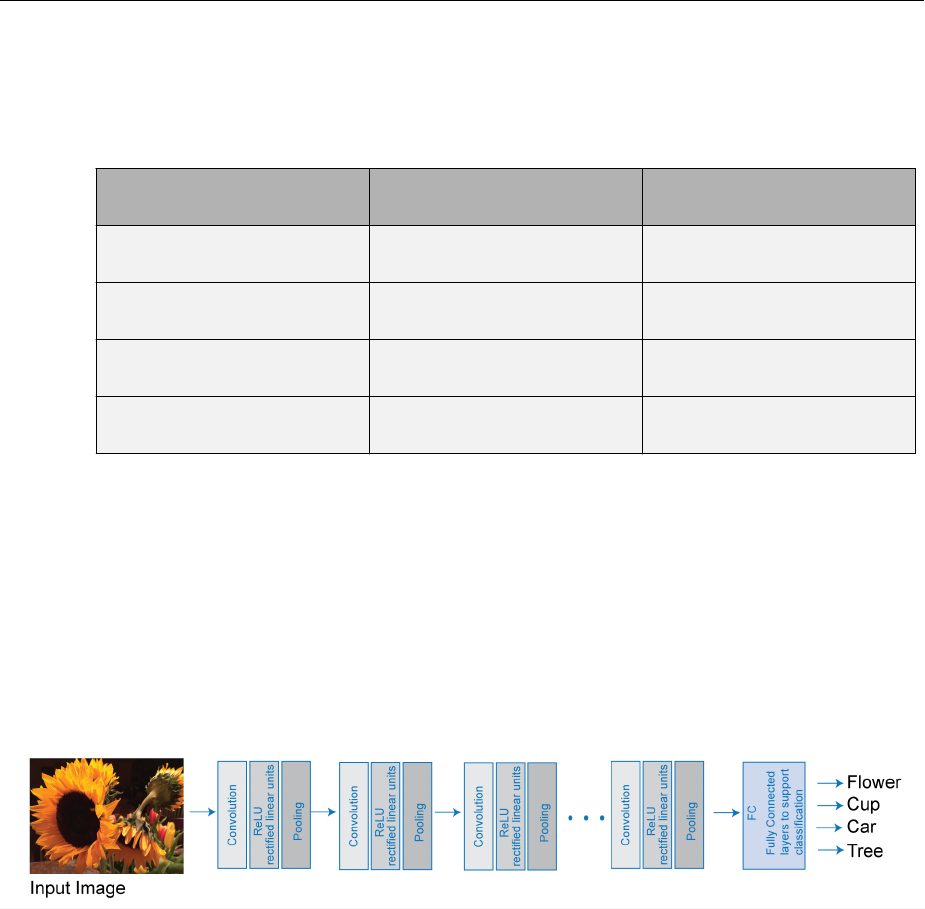

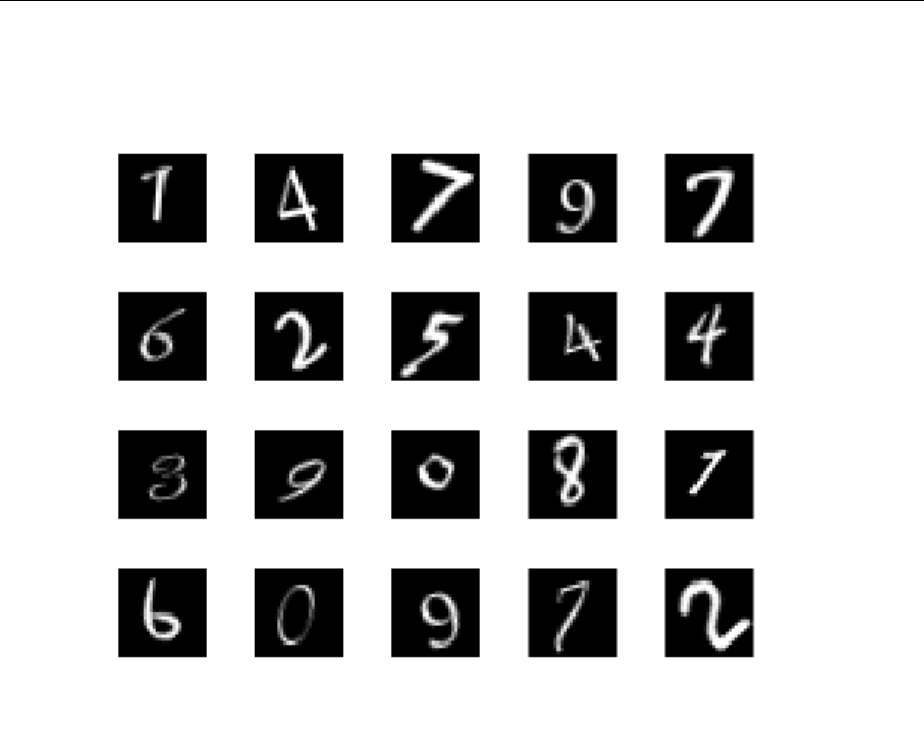

Deep Learning in MATLAB ............................. 2-2

What Is Deep Learning? ............................. 2-2

Try Deep Learning in 10 Lines of MATLAB Code ........... 2-5

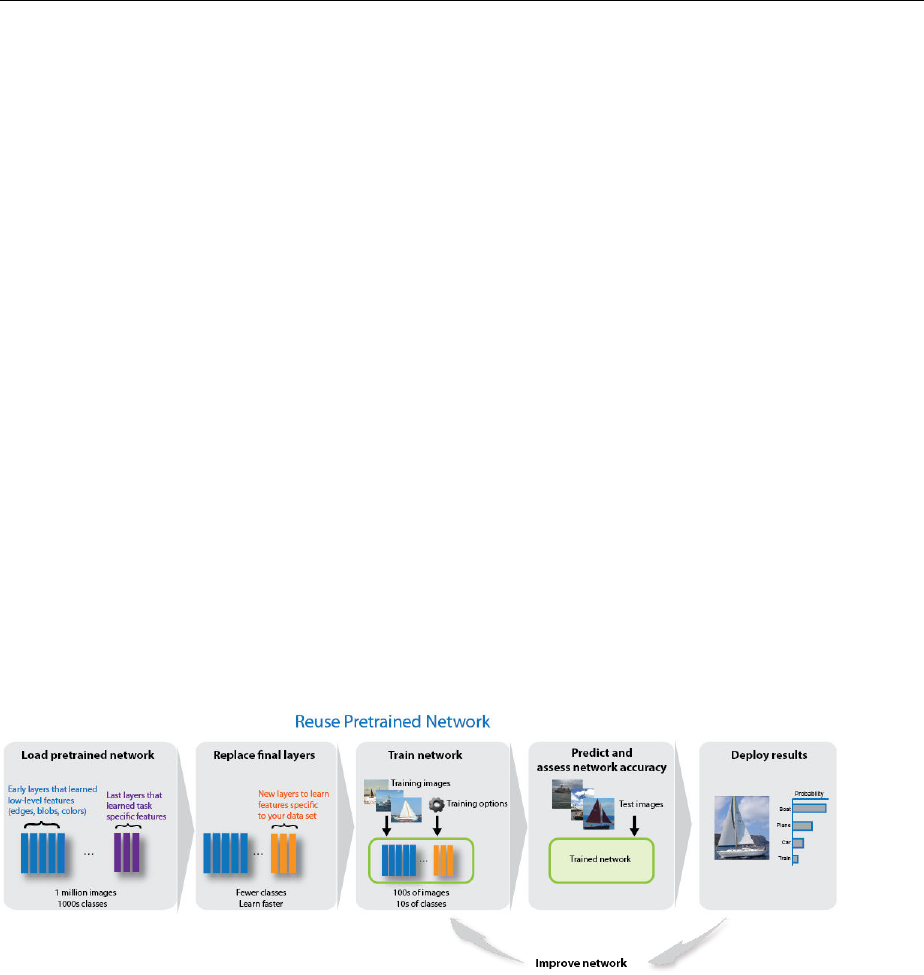

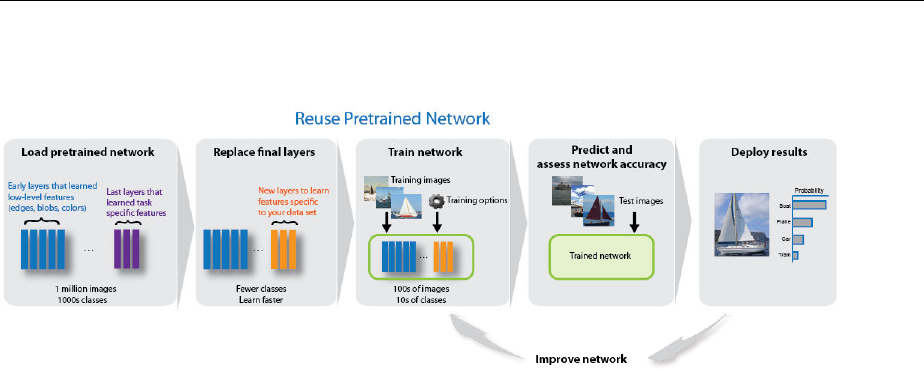

Start Deep Learning Faster Using Transfer Learning ....... 2-7

Train Classiers Using Features Extracted from

Pretrained Networks ............................. 2-8

Deep Learning with Big Data on CPUs, GPUs, in Parallel, and on

the Cloud ...................................... 2-8

Try Deep Learning in 10 Lines of MATLAB Code .......... 2-10

Deep Learning with Big Data on GPUs and in Parallel ..... 2-13

Training with Multiple GPUs ......................... 2-15

Fetch and Preprocess Data in Background .............. 2-16

Deep Learning in the Cloud ......................... 2-17

Construct Deep Network Using Autoencoders ............ 2-18

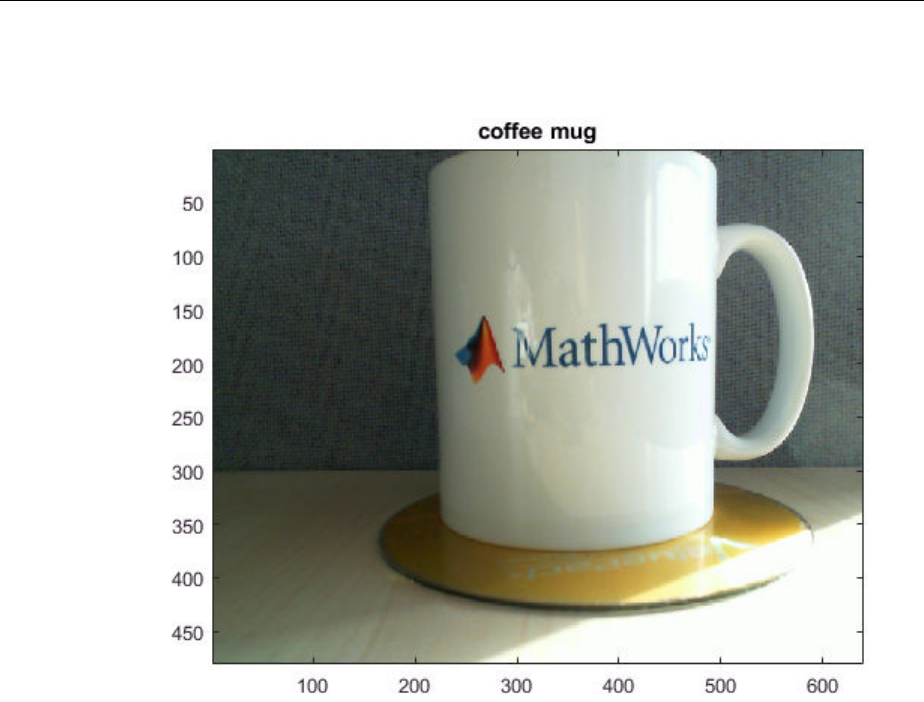

Pretrained Convolutional Neural Networks .............. 2-21

Download Pretrained Networks ...................... 2-22

Transfer Learning ................................. 2-24

Feature Extraction ................................ 2-25

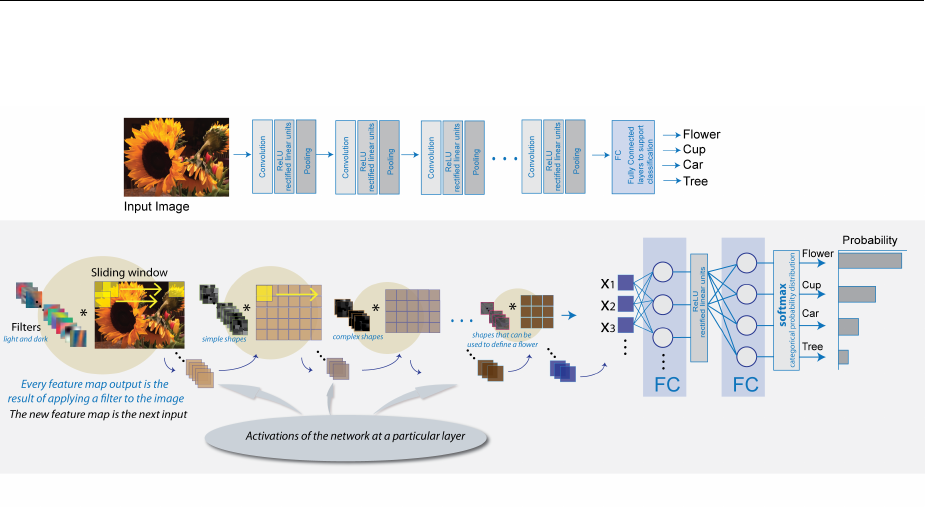

Learn About Convolutional Neural Networks ............. 2-27

List of Deep Learning Layers .......................... 2-31

Specify Layers of Convolutional Neural Network .......... 2-36

Image Input Layer ................................ 2-37

Convolutional Layer ............................... 2-37

Batch Normalization Layer .......................... 2-39

ReLU Layer ..................................... 2-39

Cross Channel Normalization (Local Response Normalization)

Layer ........................................ 2-40

Max- and Average-Pooling Layers ..................... 2-40

vi Contents

Dropout Layer ................................... 2-41

Fully Connected Layer ............................. 2-41

Output Layers ................................... 2-42

Set Up Parameters and Train Convolutional Neural

Network ......................................... 2-46

Specify Solver and Maximum Number of Epochs ......... 2-46

Specify and Modify Learning Rate .................... 2-47

Specify Validation Data ............................. 2-48

Select Hardware Resource .......................... 2-48

Save Checkpoint Networks and Resume Training ......... 2-49

Set Up Parameters in Convolutional and Fully Connected

Layers ....................................... 2-49

Train Your Network ............................... 2-50

Resume Training from a Checkpoint Network ............ 2-51

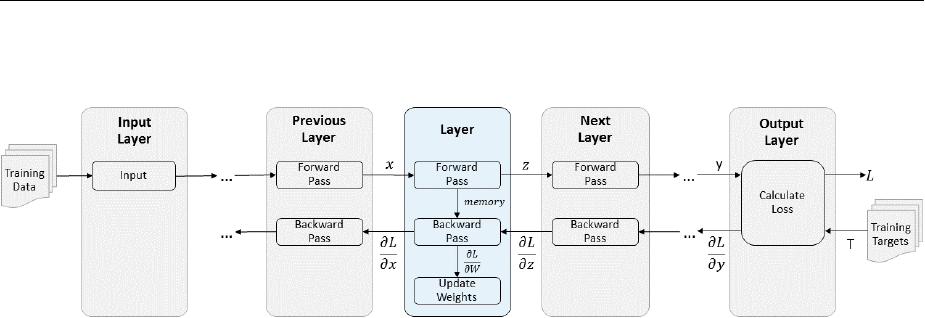

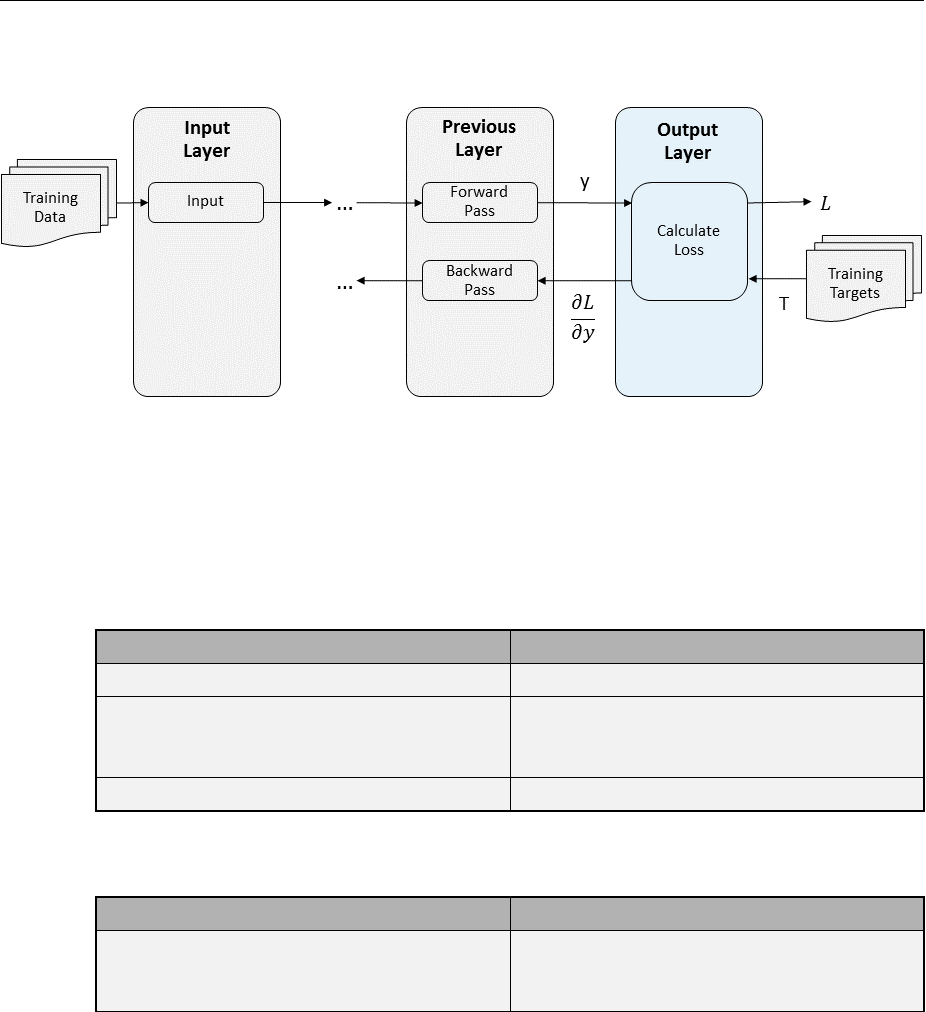

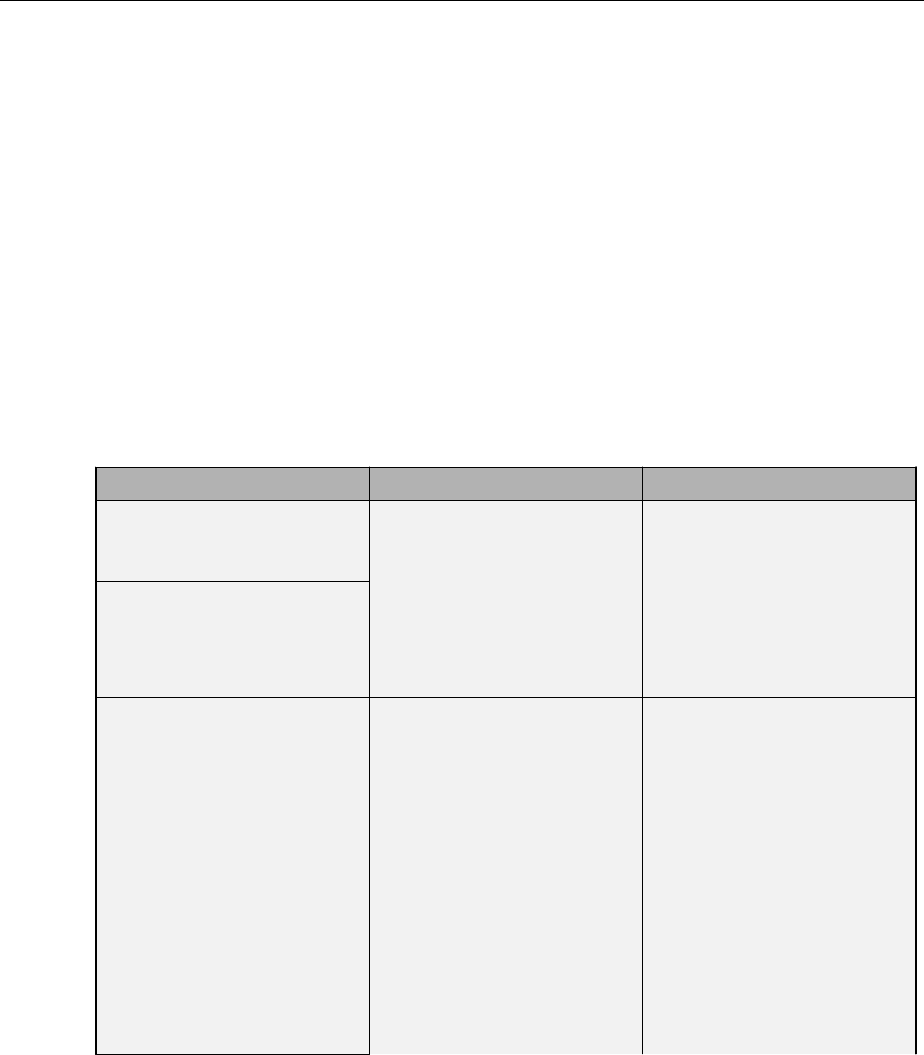

Dene Custom Deep Learning Layers ................... 2-56

Layer Templates .................................. 2-57

Layer Architecture ................................ 2-60

Check Validity of Layer ............................. 2-64

Output Layer Architecture .......................... 2-66

Dene a Custom Deep Learning Layer with Learnable

Parameters ....................................... 2-73

Layer with Learnable Parameters Template ............. 2-74

Name the Layer .................................. 2-75

Declare Properties and Learnable Parameters ........... 2-76

Create Constructor Function ........................ 2-77

Create Forward Functions .......................... 2-79

Create Backward Function .......................... 2-80

Completed Layer ................................. 2-82

GPU Compatibility ................................ 2-83

Check Validity of Layer Using checkLayer ............... 2-84

Include User-Dened Layer in Network ................ 2-85

Dene a Custom Regression Output Layer ............... 2-87

Regression Output Layer Template .................... 2-87

Name the Layer .................................. 2-88

Declare Layer Properties ........................... 2-89

Create Constructor Function ........................ 2-89

Create Forward Loss Function ....................... 2-90

Create Backward Loss Function ...................... 2-92

vii

Completed Layer ................................. 2-92

GPU Compatibility ................................ 2-93

Include Custom Regression Output Layer in Network ...... 2-94

Dene a Custom Classication Output Layer ............. 2-97

Classication Output Layer Template .................. 2-97

Name the Layer .................................. 2-98

Declare Layer Properties ........................... 2-99

Create Constructor Function ....................... 2-100

Create Forward Loss Function ...................... 2-100

Create Backward Loss Function ..................... 2-102

Completed Layer ................................ 2-102

GPU Compatibility ............................... 2-103

Include Custom Classication Output Layer in Network ... 2-104

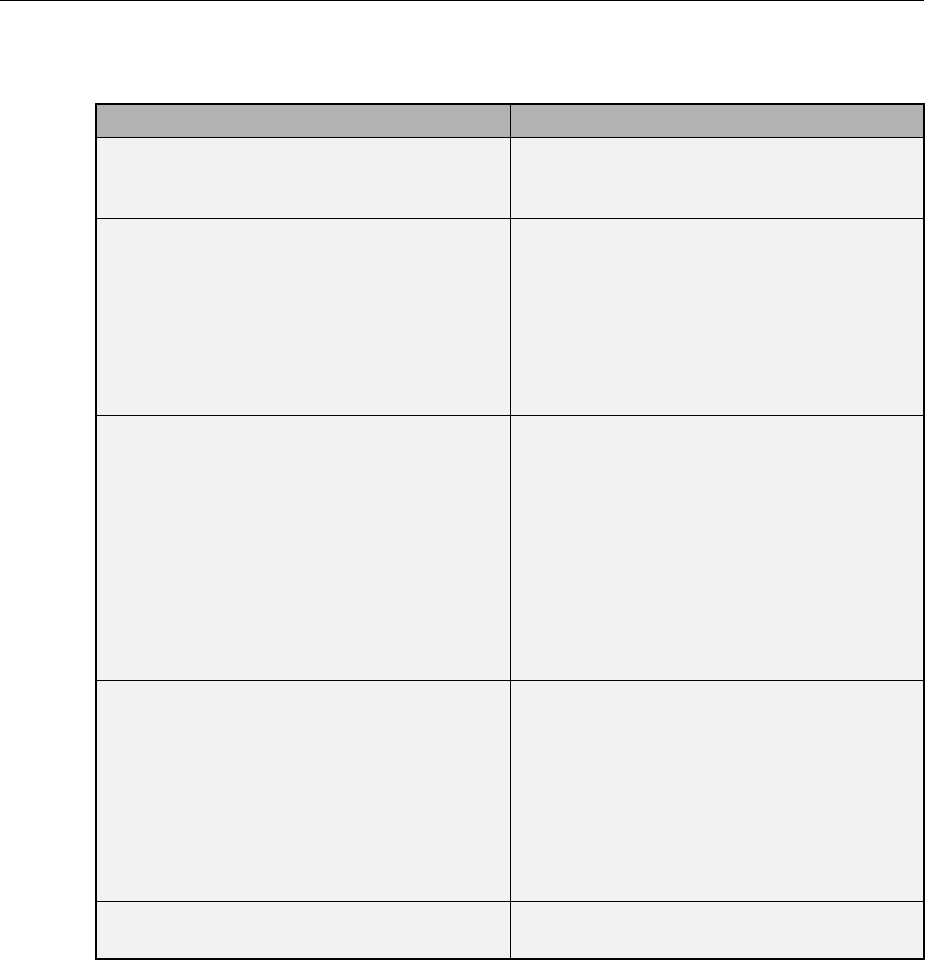

Check Custom Layer Validity ......................... 2-107

List of Tests .................................... 2-107

Diagnostics .................................... 2-109

Check Validity of Layer Using checkLayer .............. 2-114

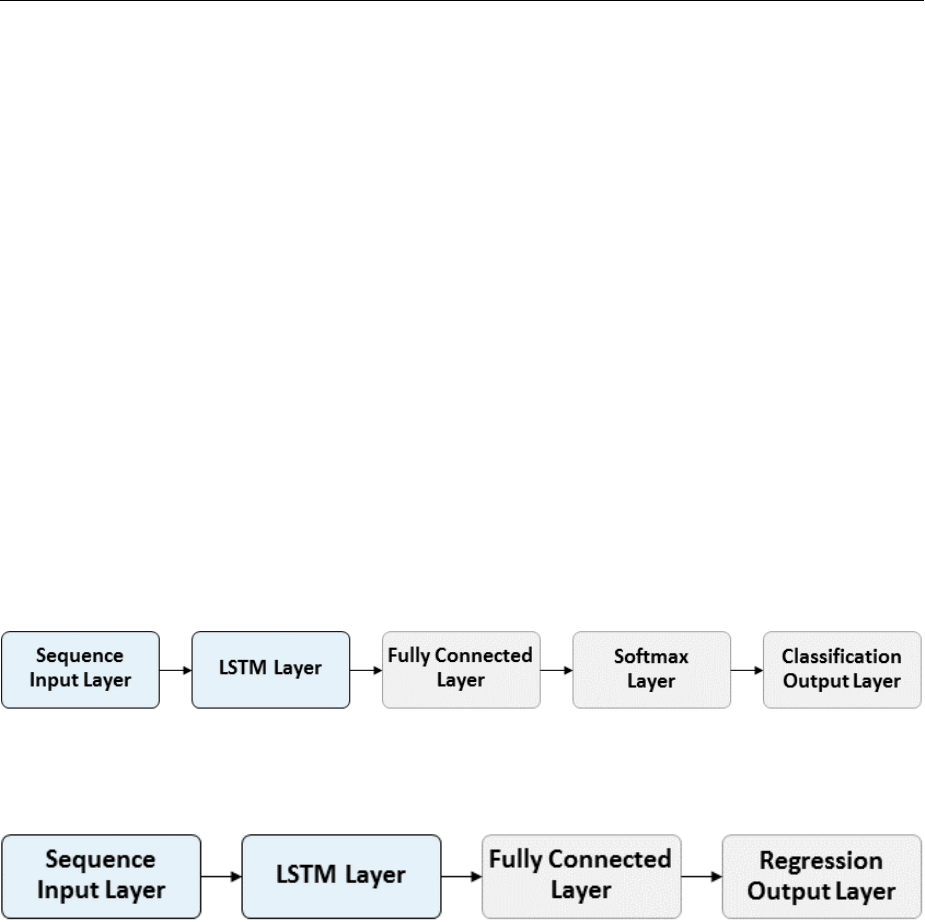

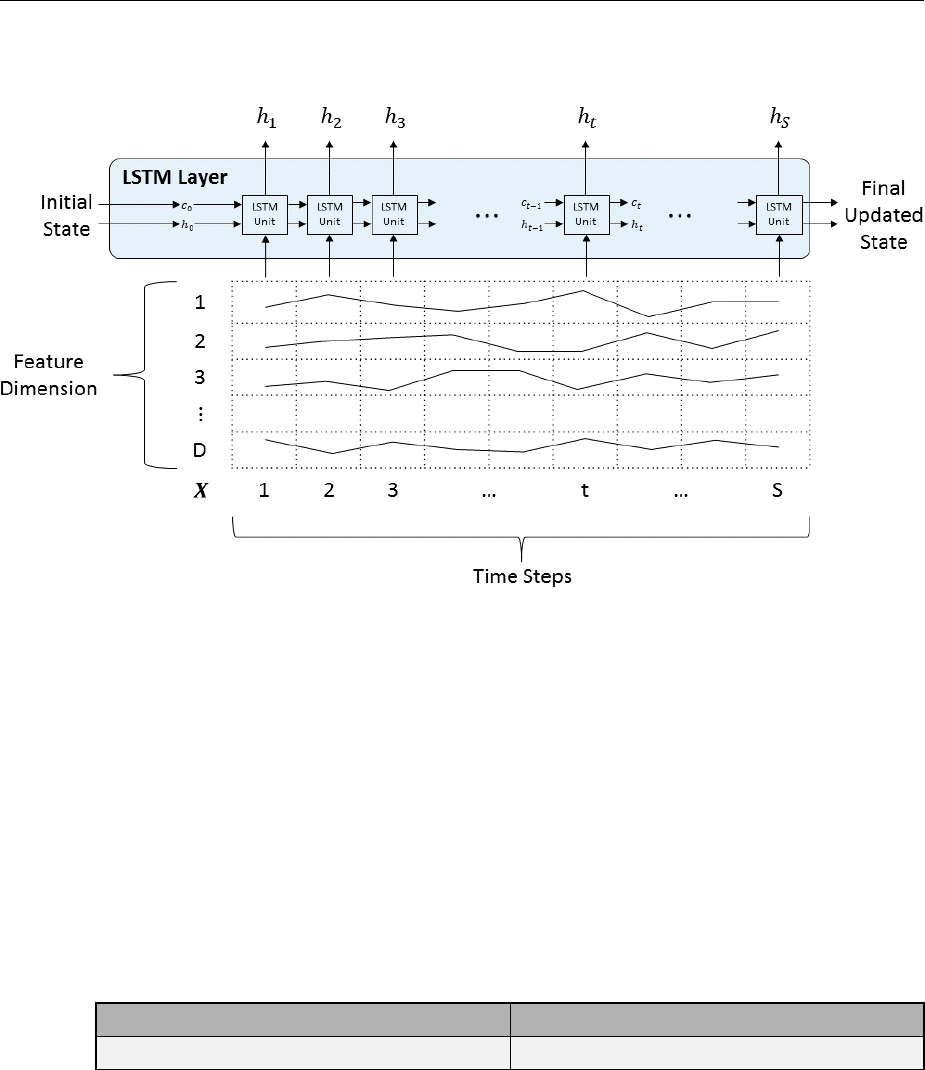

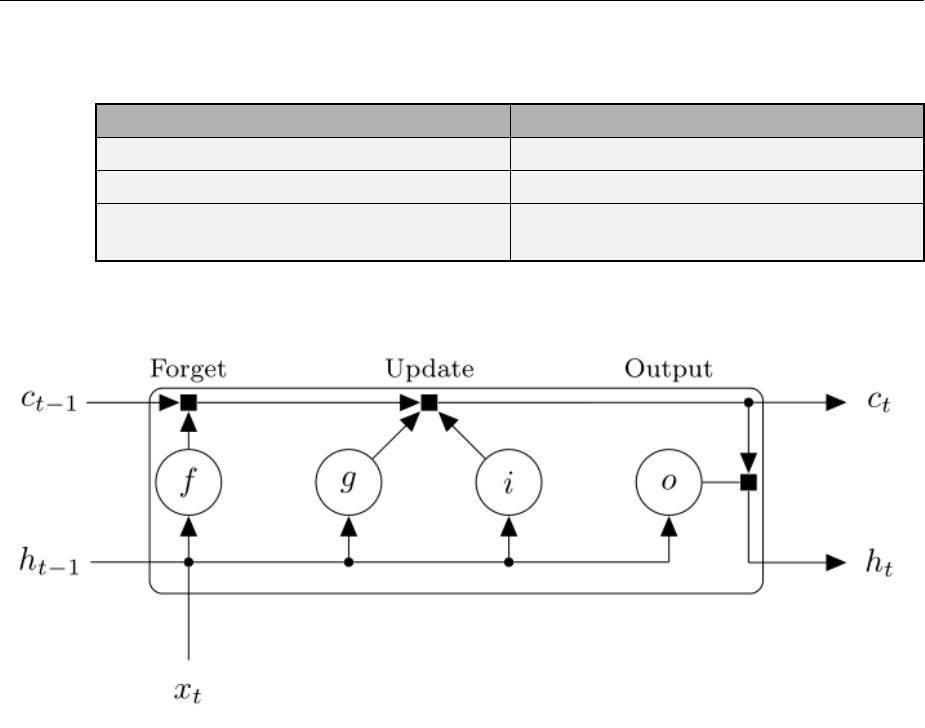

Long Short-Term Memory Networks ................... 2-116

LSTM Network Architecture ........................ 2-116

Layers ........................................ 2-119

Classication and Prediction ........................ 2-120

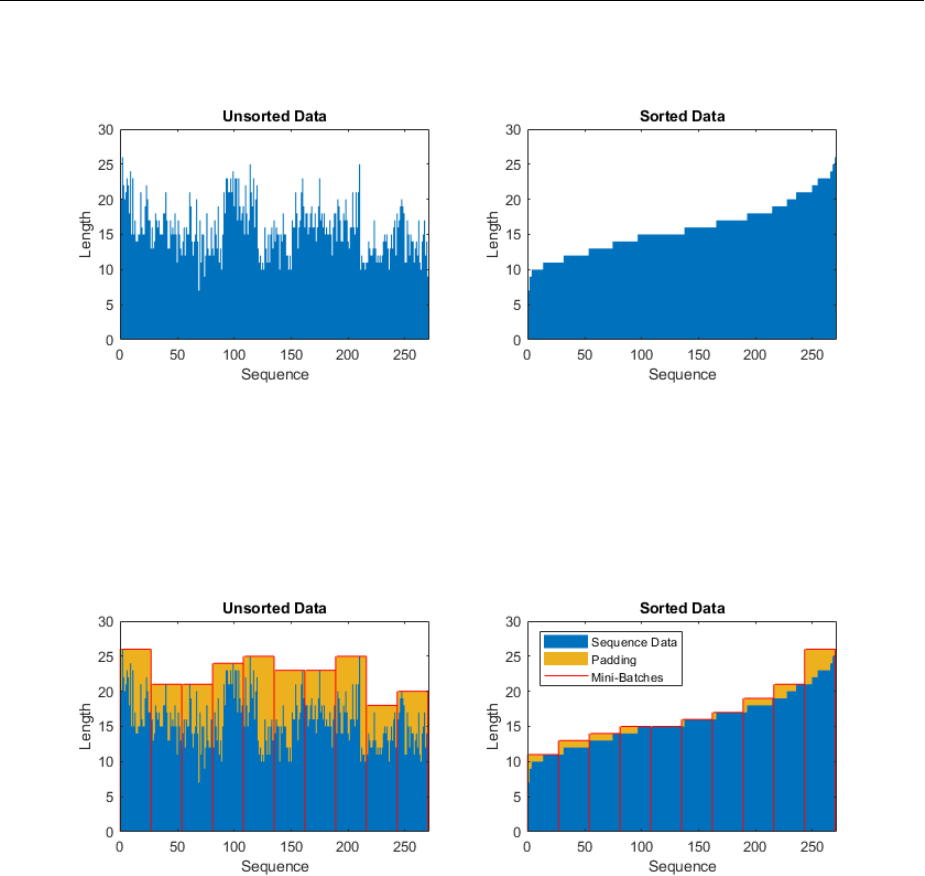

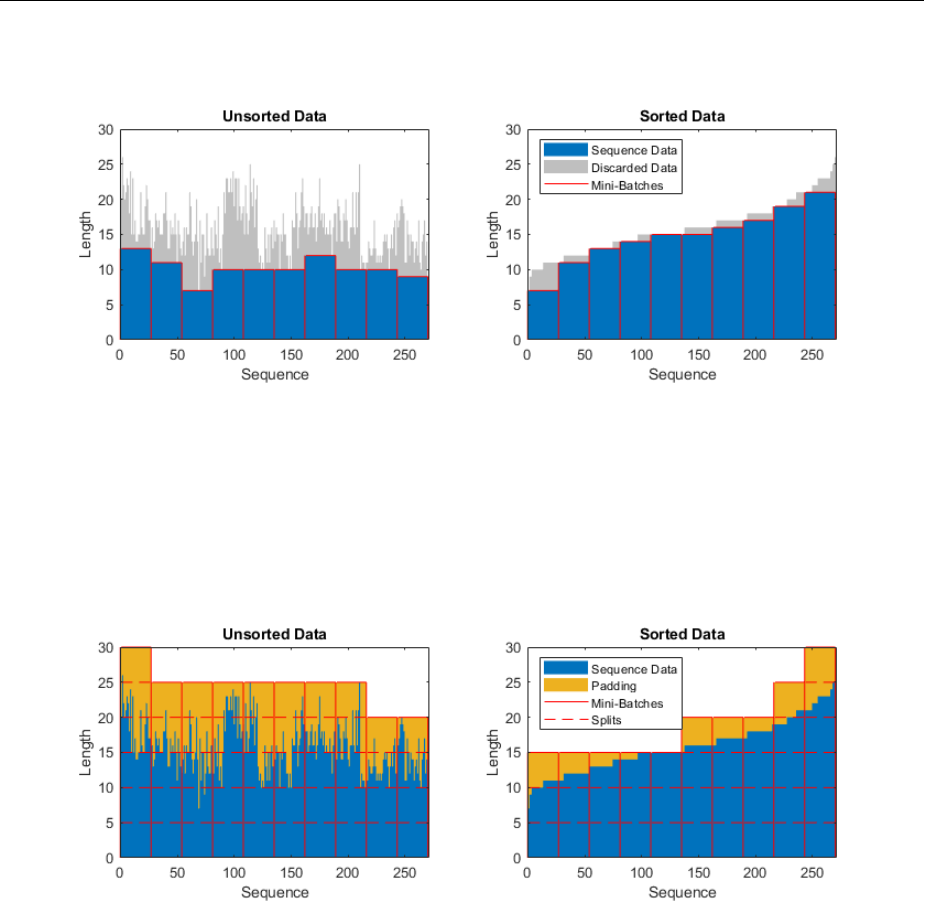

Sequence Padding, Truncation, and Splitting ........... 2-120

LSTM Layer Architecture .......................... 2-122

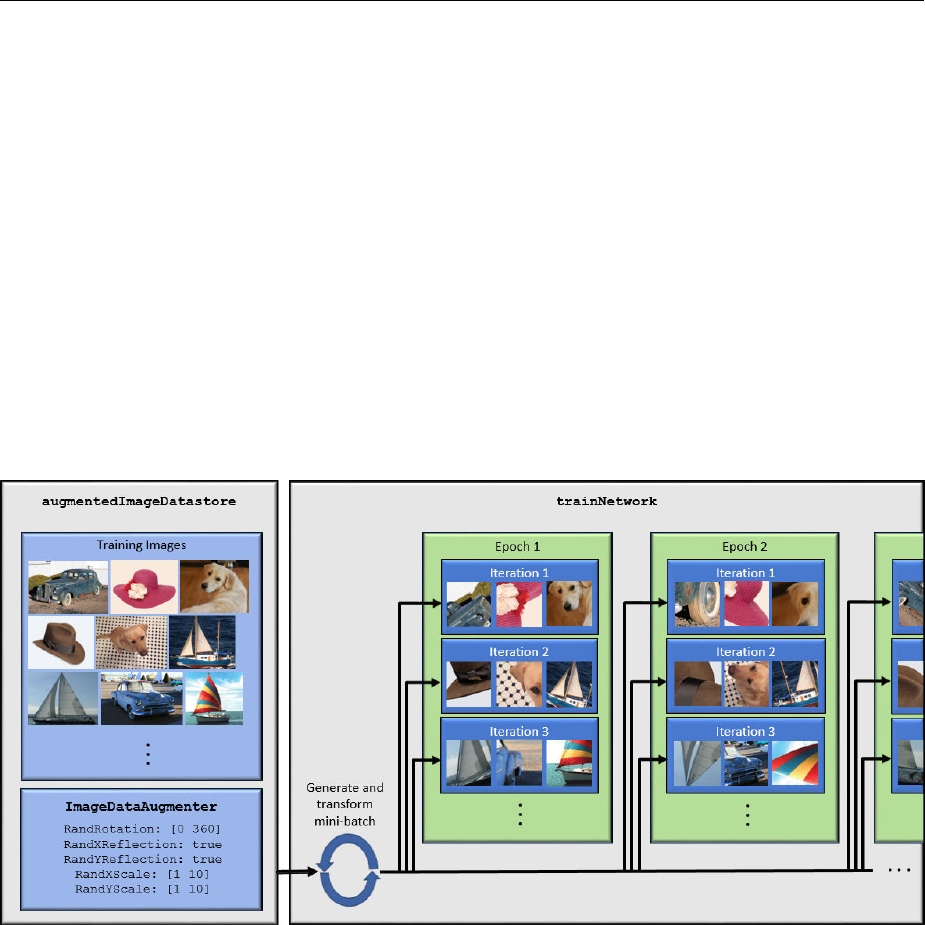

Preprocess Images for Deep Learning .................. 2-127

Resize Images .................................. 2-127

Augment Images for Training ....................... 2-128

Advanced Image Preprocessing ..................... 2-129

Develop Custom Mini-Batch Datastore ................. 2-131

Overview ...................................... 2-131

Implement MiniBatchable Datastore .................. 2-132

Add Support for Shuing .......................... 2-135

Add Support for Parallel and Multi-GPU Training ........ 2-136

Add Support for Background Dispatch ................ 2-137

Validate Custom Mini-Batch Datastore ................ 2-138

viii Contents

Deep Learning in the Cloud

3

Scale Up Deep Learning in Parallel and in the Cloud ........ 3-2

Deep Learning on Multiple GPUs ...................... 3-2

Deep Learning in the Cloud .......................... 3-4

Advanced Support for Fast Multi-Node GPU

Communication ................................. 3-5

Deep Learning with MATLAB on Multiple GPUs ............ 3-7

Select Particular GPUs to Use for Training ............... 3-7

Train Network in the Cloud Using Built-in Parallel Support ... 3-8

Multilayer Neural Networks and Backpropagation

Training

4

Multilayer Neural Networks and Backpropagation

Training .......................................... 4-2

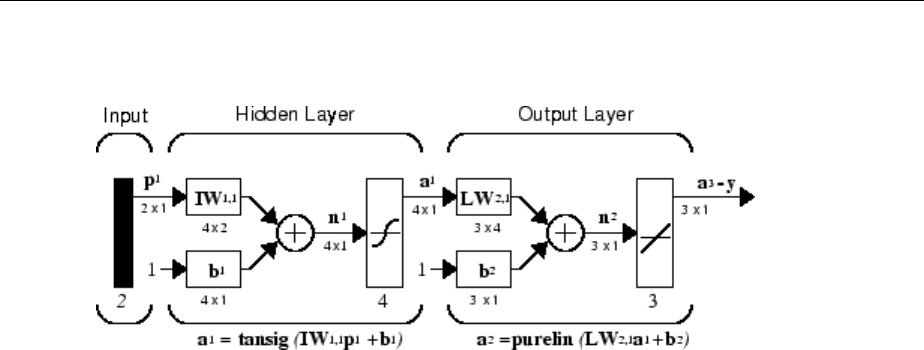

Multilayer Neural Network Architecture .................. 4-4

Neuron Model (logsig, tansig, purelin) .................. 4-4

Feedforward Neural Network ......................... 4-5

Prepare Data for Multilayer Neural Networks ............. 4-8

Choose Neural Network Input-Output Processing

Functions ......................................... 4-9

Representing Unknown or Don't-Care Targets ........... 4-11

Divide Data for Optimal Neural Network Training ......... 4-12

Create, Congure, and Initialize Multilayer

Neural Networks .................................. 4-14

Other Related Architectures ......................... 4-15

Initializing Weights (init) ........................... 4-15

Train and Apply Multilayer Neural Networks ............. 4-17

Training Algorithms ............................... 4-18

ix

Training Example ................................. 4-20

Use the Network ................................. 4-22

Analyze Shallow Neural Network Performance After

Training ......................................... 4-24

Improving Results ................................ 4-29

Limitations and Cautions ............................. 4-30

Dynamic Neural Networks

5

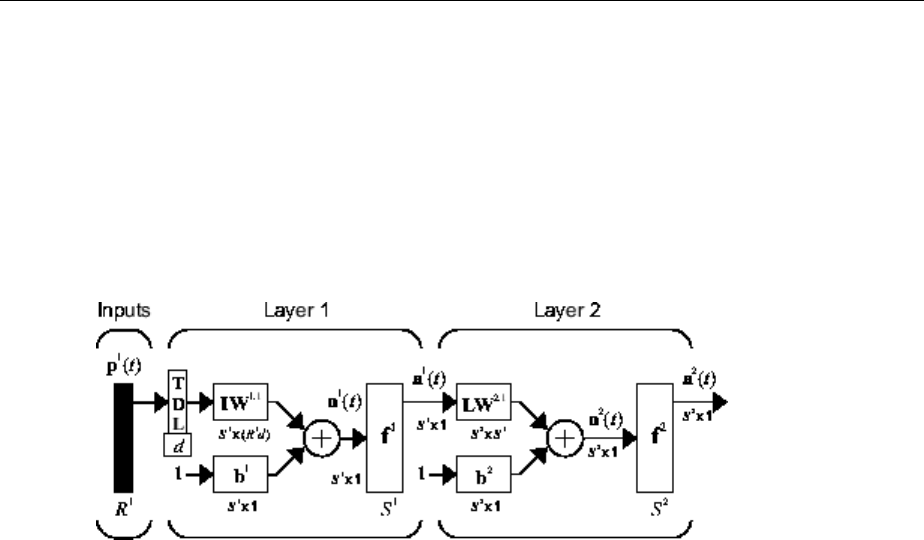

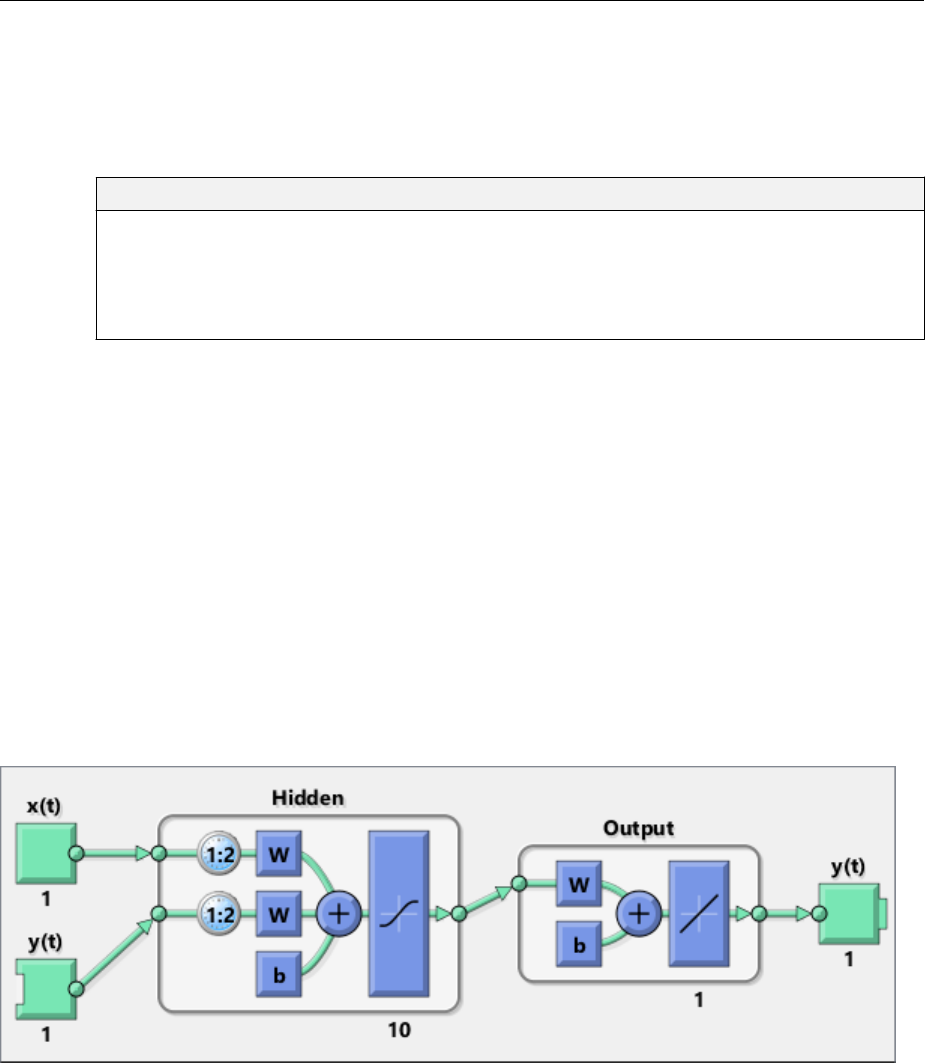

Introduction to Dynamic Neural Networks ................ 5-2

How Dynamic Neural Networks Work .................... 5-3

Feedforward and Recurrent Neural Networks ............. 5-3

Applications of Dynamic Networks .................... 5-10

Dynamic Network Structures ........................ 5-10

Dynamic Network Training .......................... 5-11

Design Time Series Time-Delay Neural Networks ......... 5-13

Prepare Input and Layer Delay States .................. 5-17

Design Time Series Distributed Delay Neural Networks .... 5-19

Design Time Series NARX Feedback Neural Networks ..... 5-22

Multiple External Variables .......................... 5-29

Design Layer-Recurrent Neural Networks ............... 5-30

Create Reference Model Controller with MATLAB Script ... 5-33

Multiple Sequences with Dynamic Neural Networks ....... 5-40

Neural Network Time-Series Utilities ................... 5-41

Train Neural Networks with Error Weights ............... 5-43

Normalize Errors of Multiple Outputs ................... 5-46

xContents

Multistep Neural Network Prediction ................... 5-51

Set Up in Open-Loop Mode .......................... 5-51

Multistep Closed-Loop Prediction From Initial Conditions ... 5-52

Multistep Closed-Loop Prediction Following Known

Sequence ..................................... 5-52

Following Closed-Loop Simulation with Open-Loop

Simulation .................................... 5-53

Control Systems

6

Introduction to Neural Network Control Systems .......... 6-2

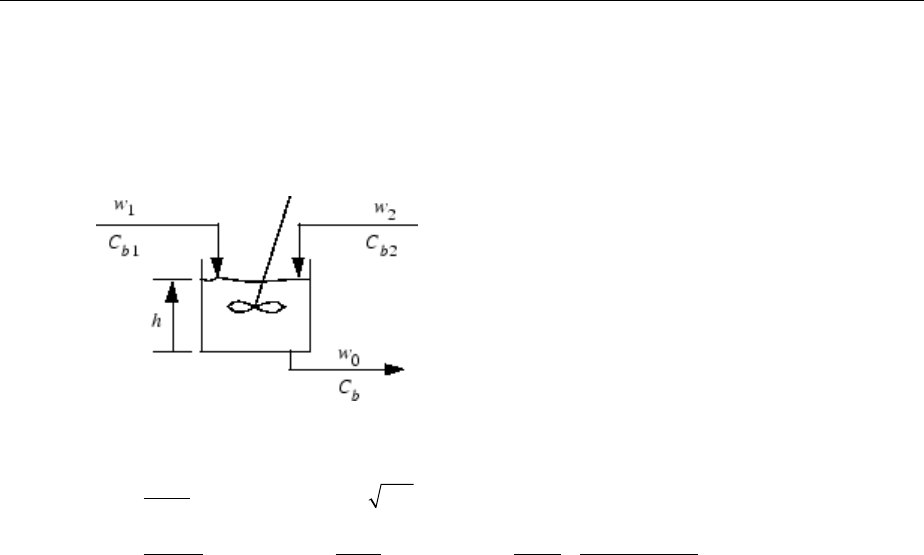

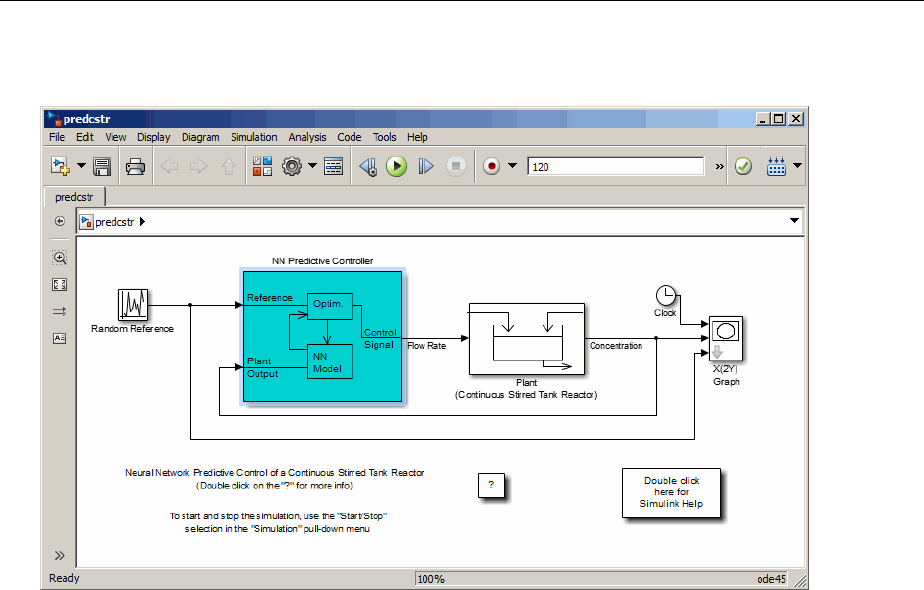

Design Neural Network Predictive Controller in Simulink ... 6-4

System Identication ............................... 6-4

Predictive Control .................................. 6-5

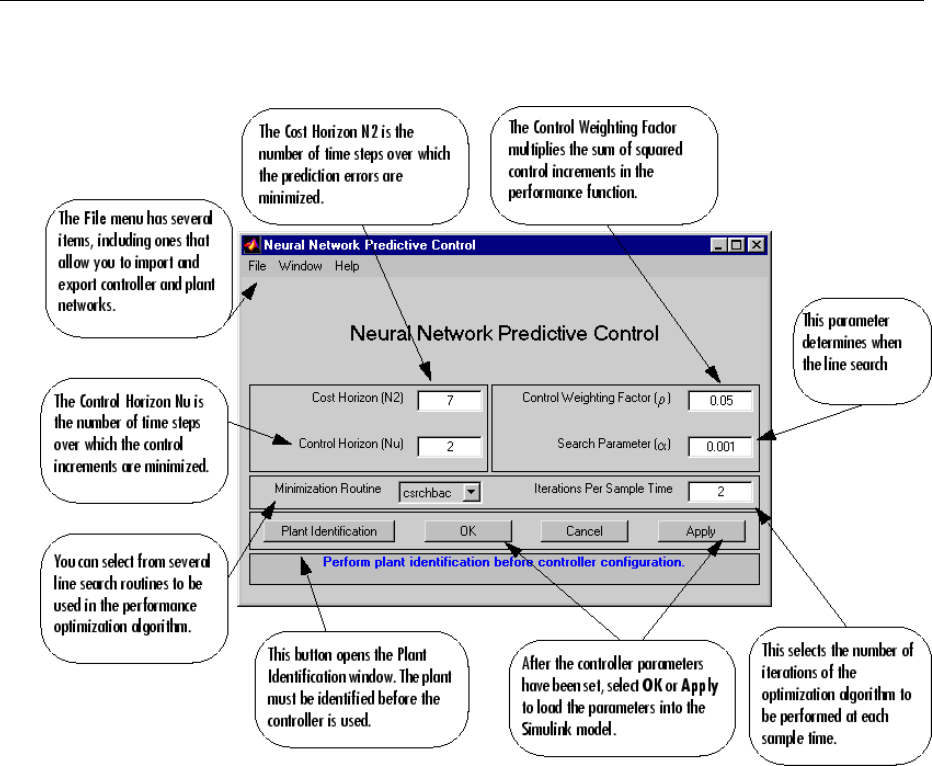

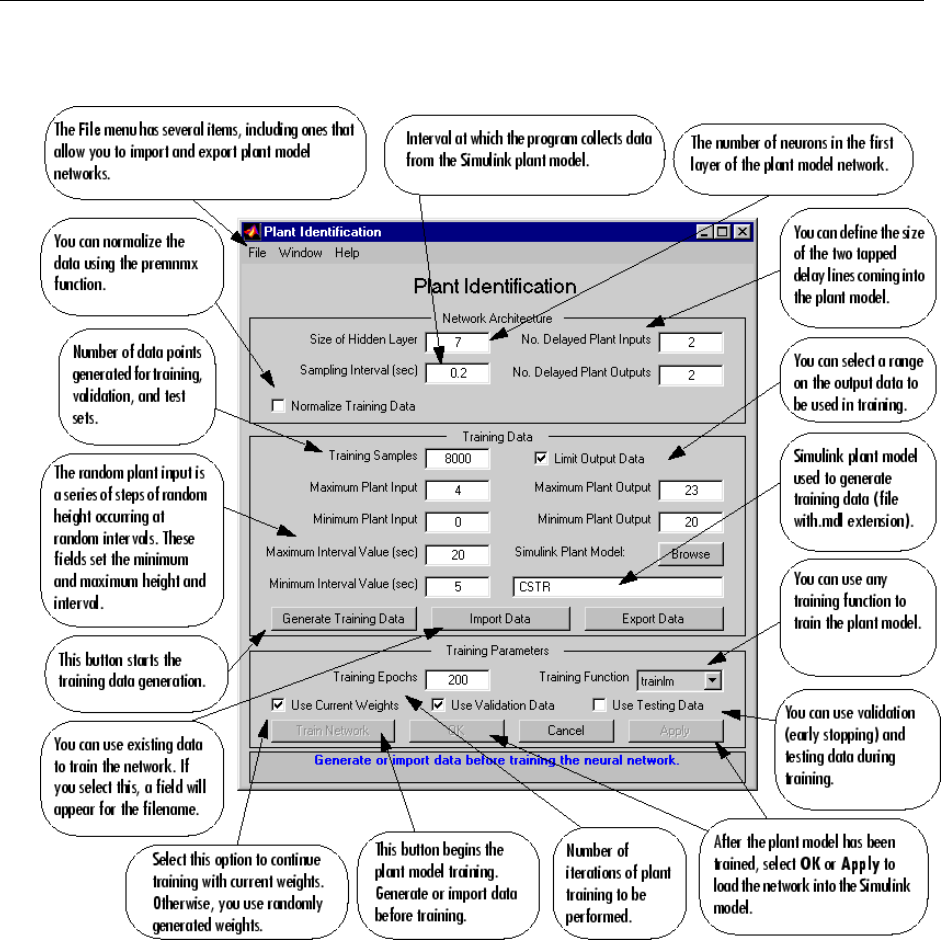

Use the Neural Network Predictive Controller Block ........ 6-6

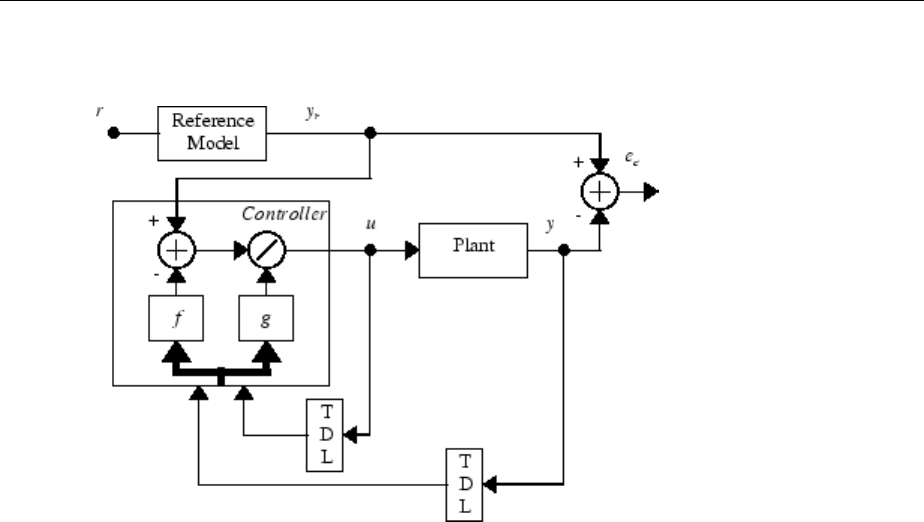

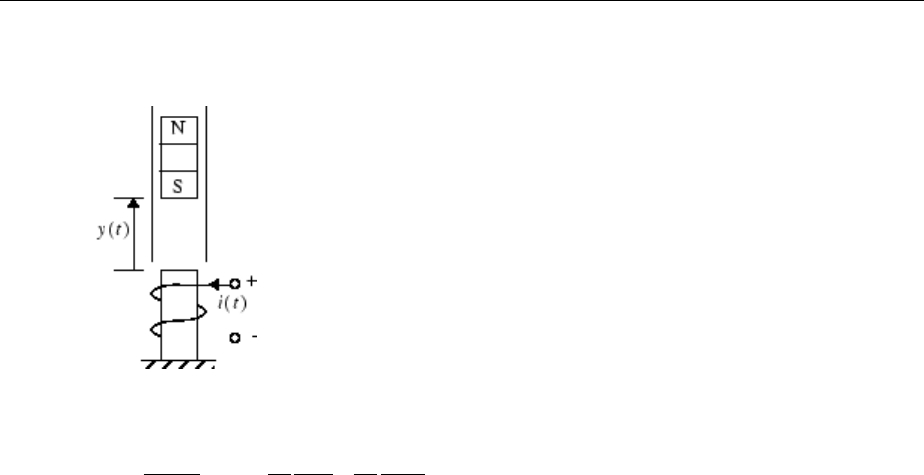

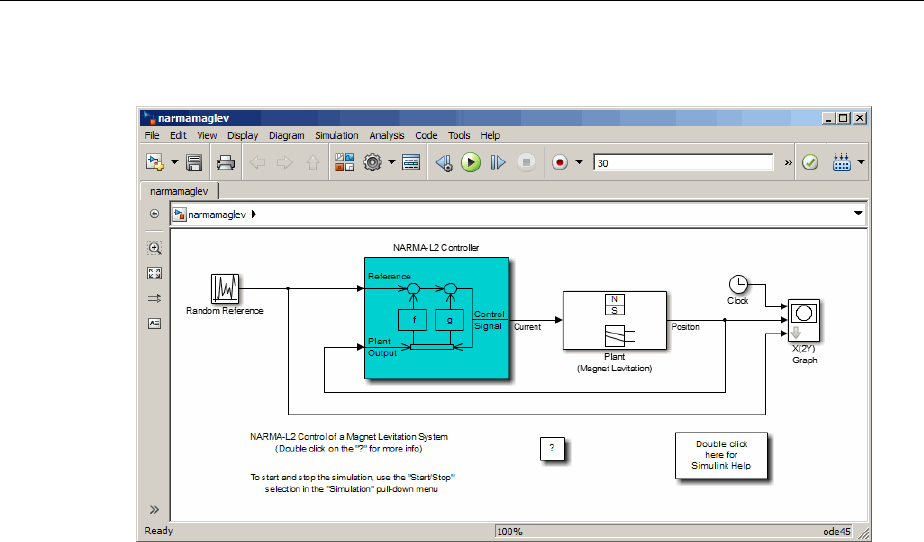

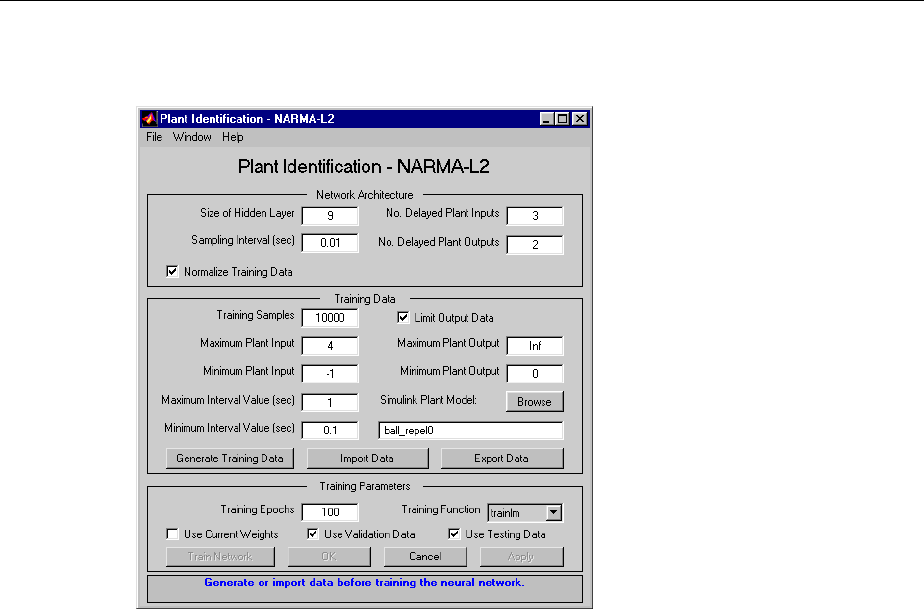

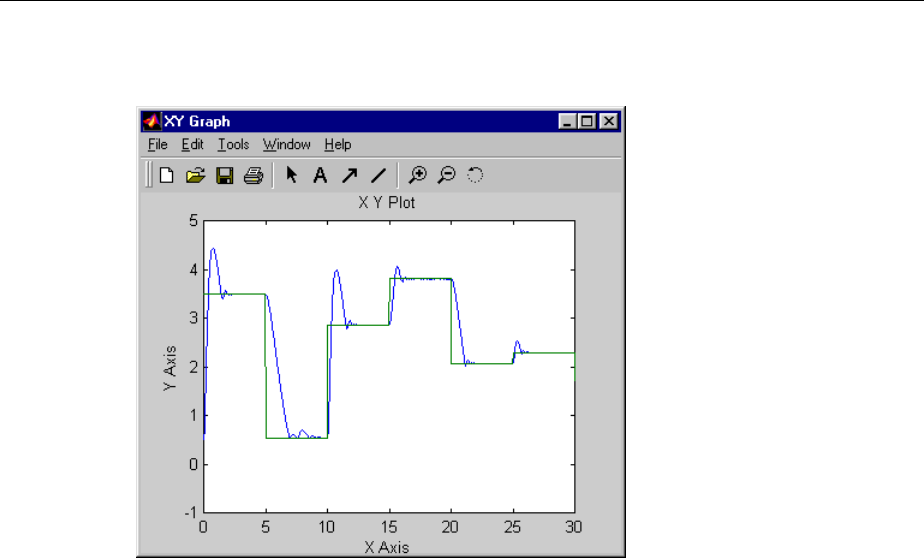

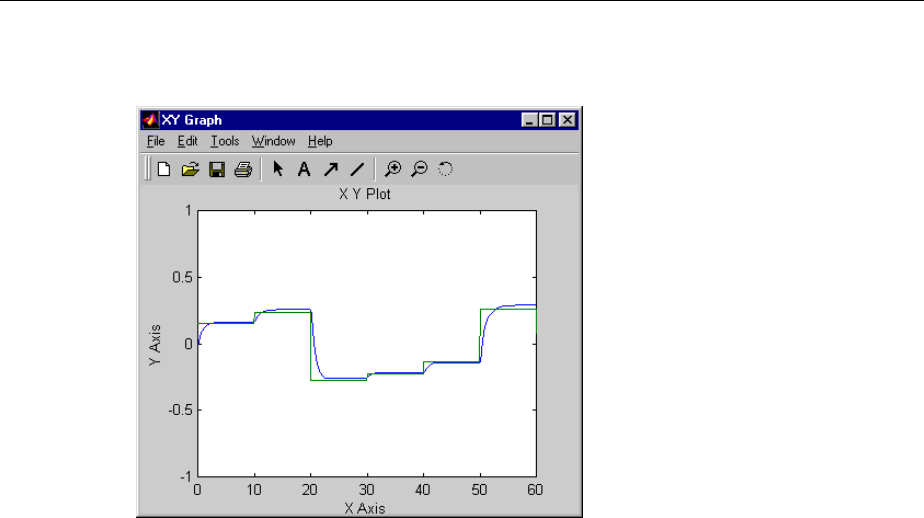

Design NARMA-L2 Neural Controller in Simulink ......... 6-14

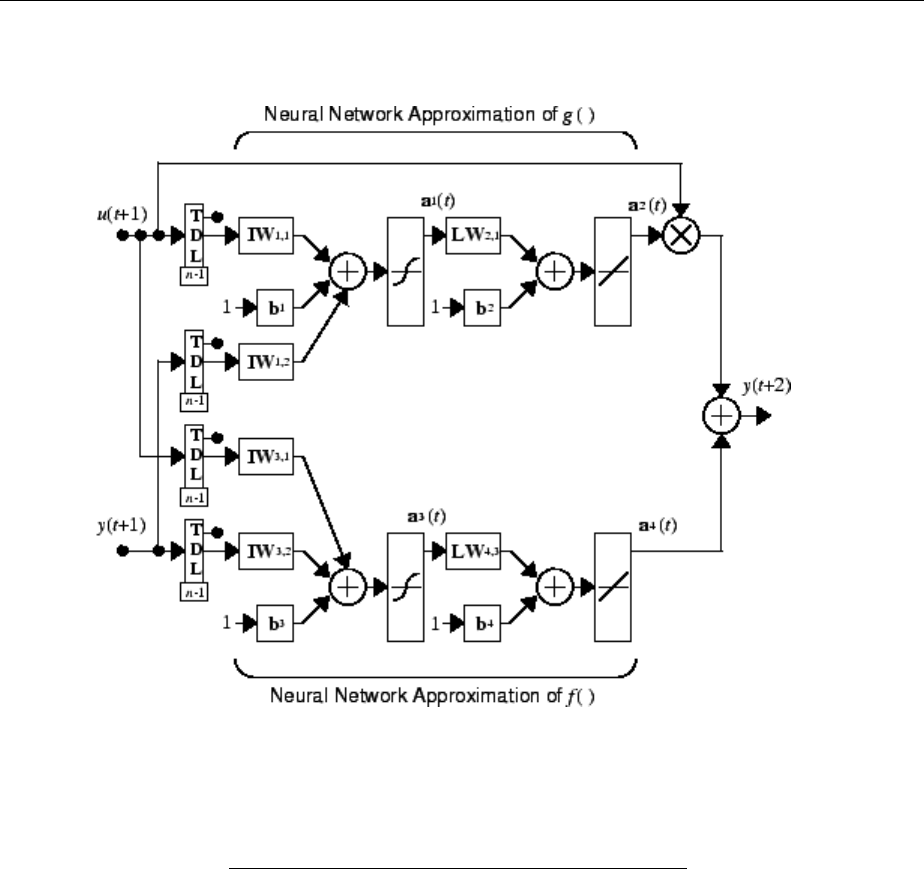

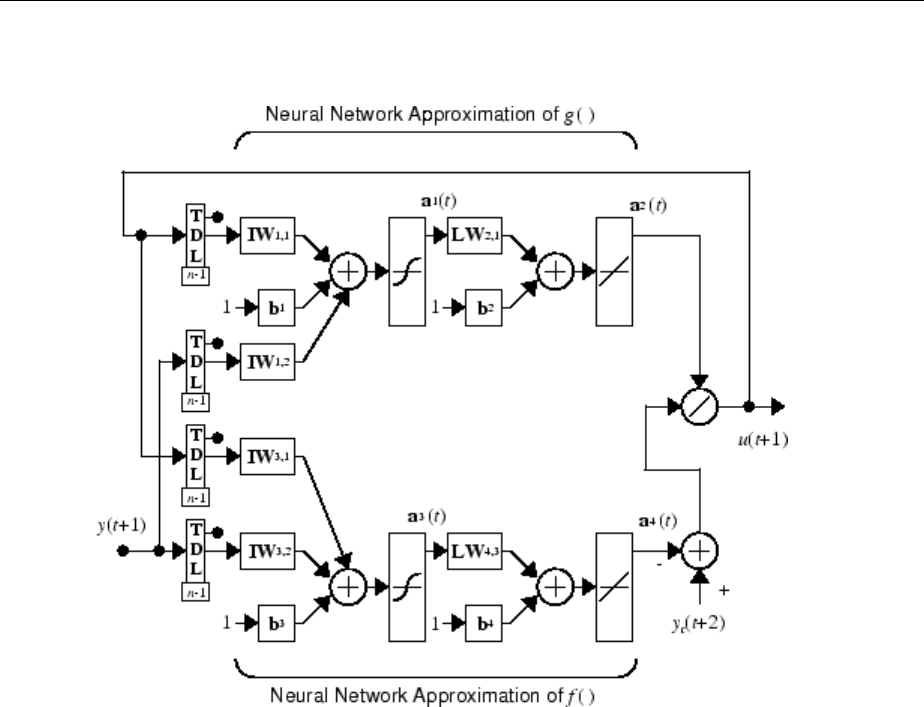

Identication of the NARMA-L2 Model ................. 6-14

NARMA-L2 Controller .............................. 6-16

Use the NARMA-L2 Controller Block .................. 6-18

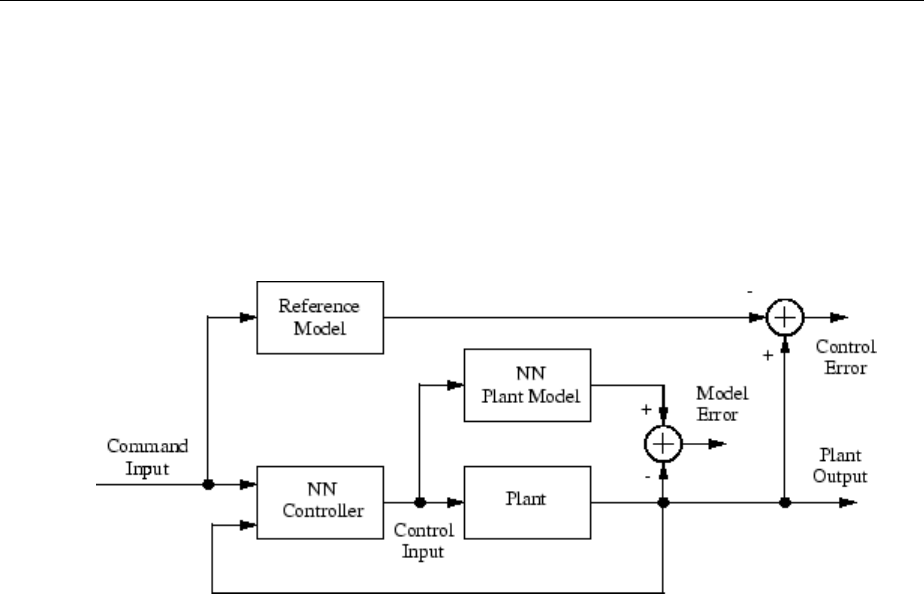

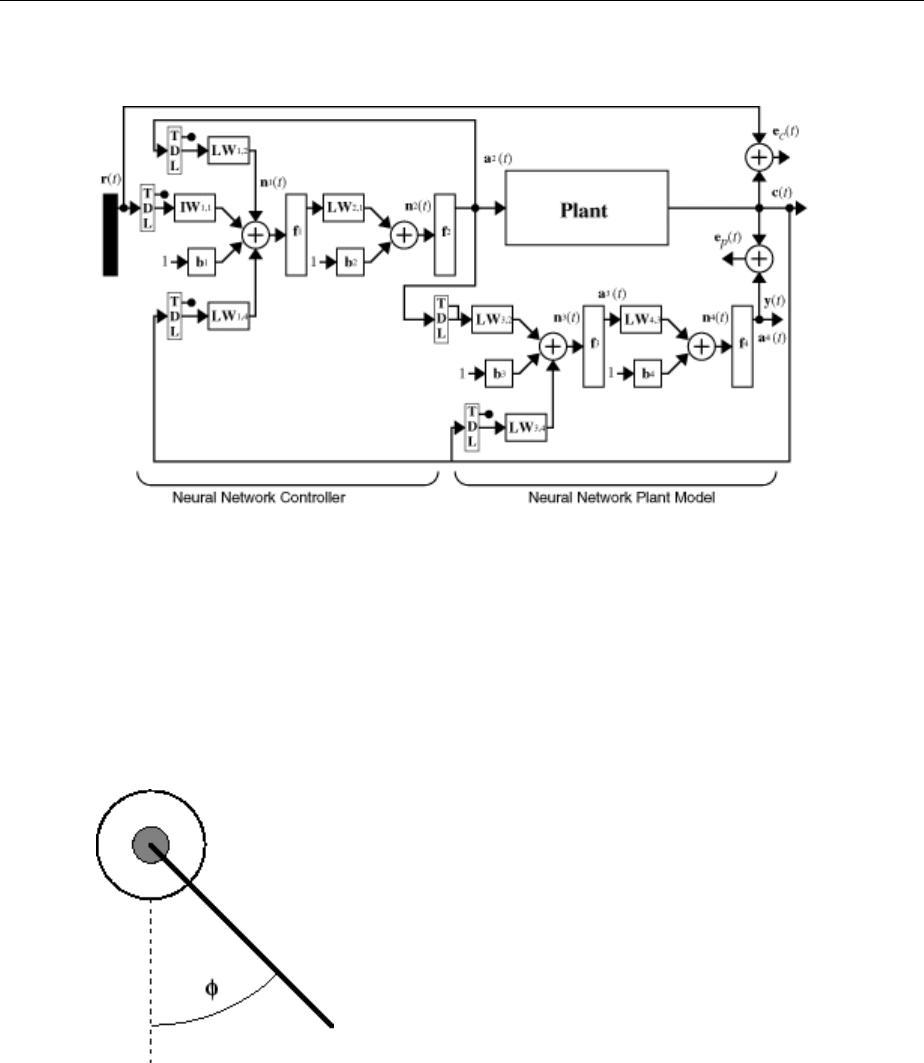

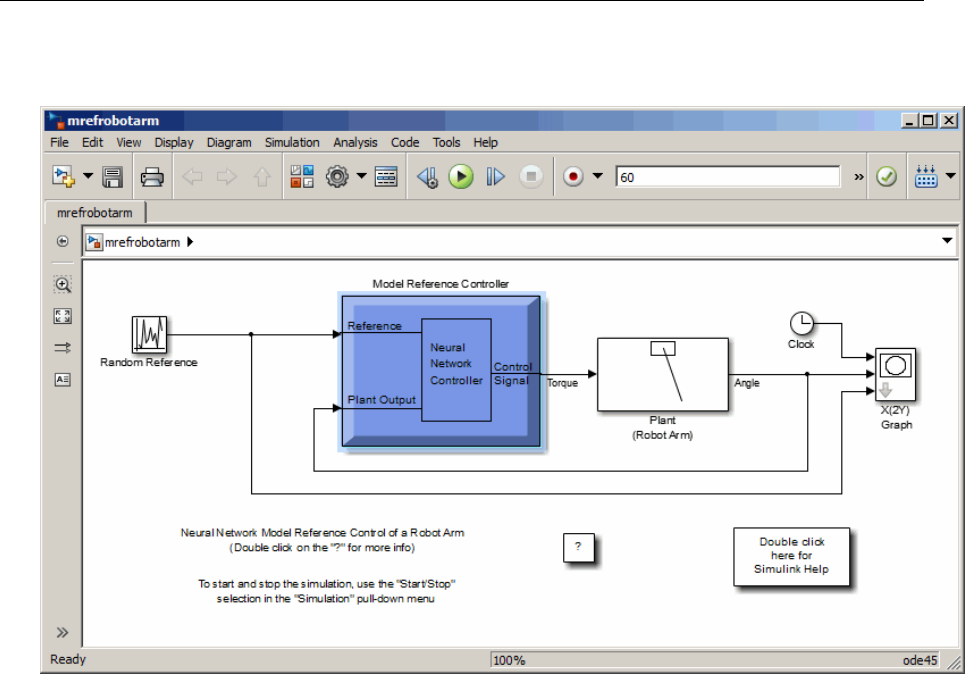

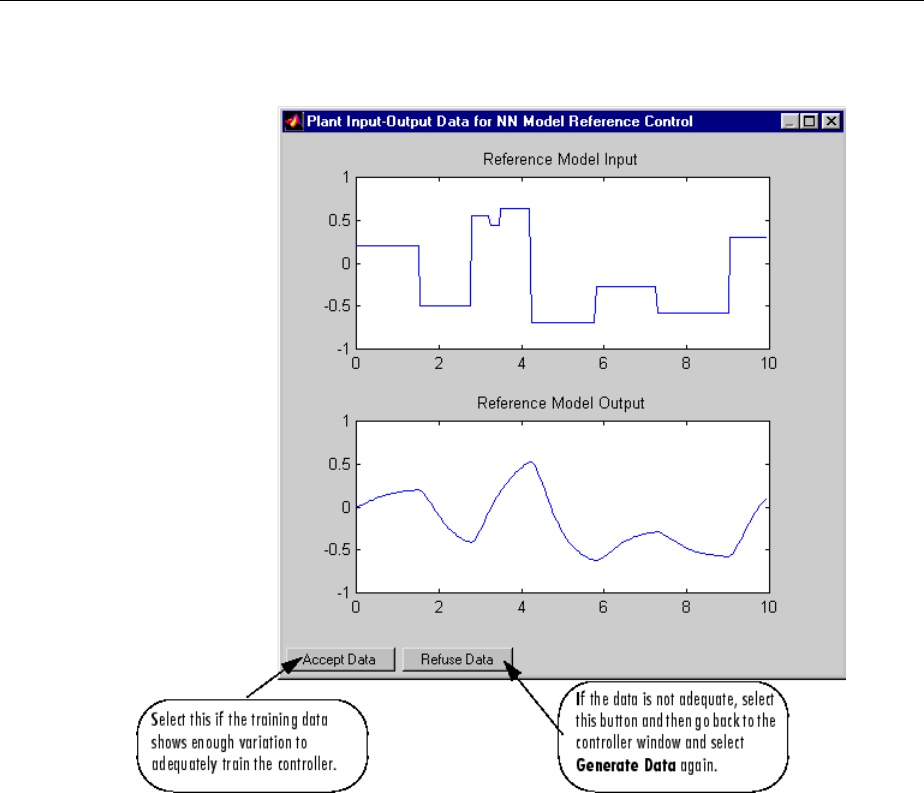

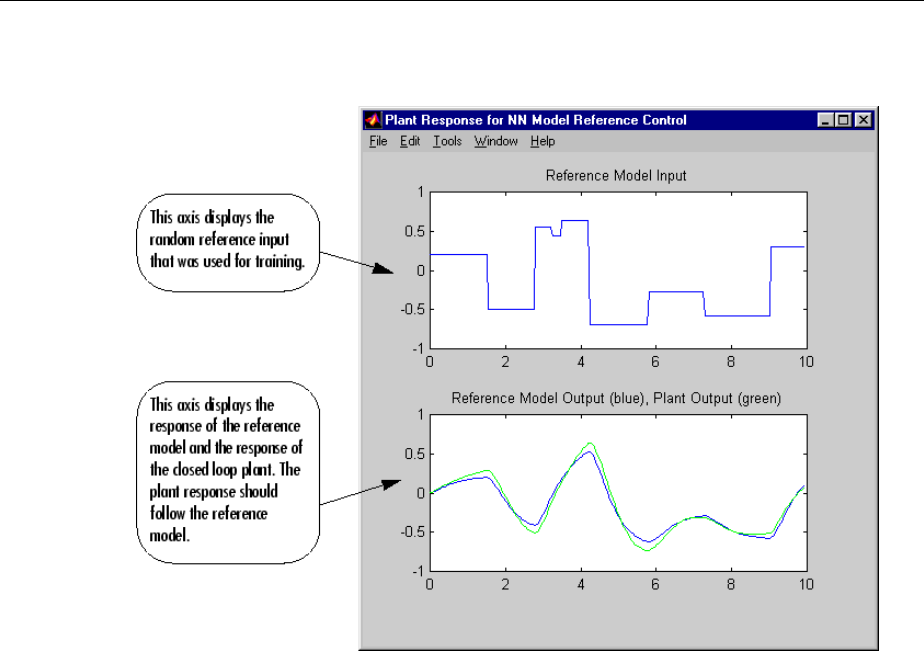

Design Model-Reference Neural Controller in Simulink .... 6-23

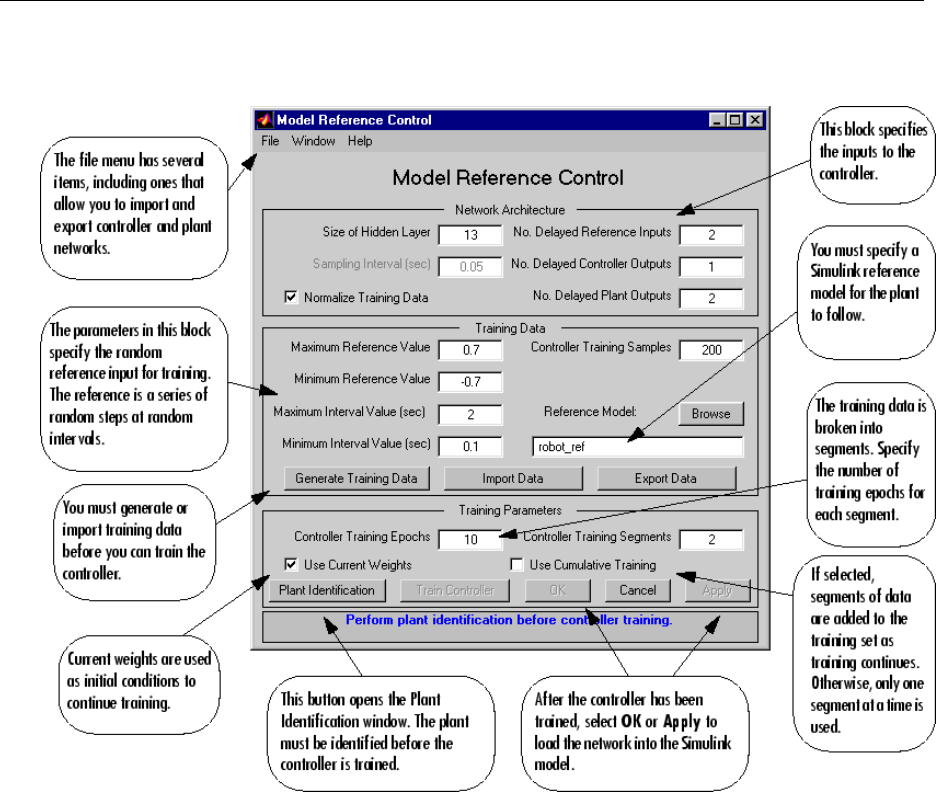

Use the Model Reference Controller Block .............. 6-24

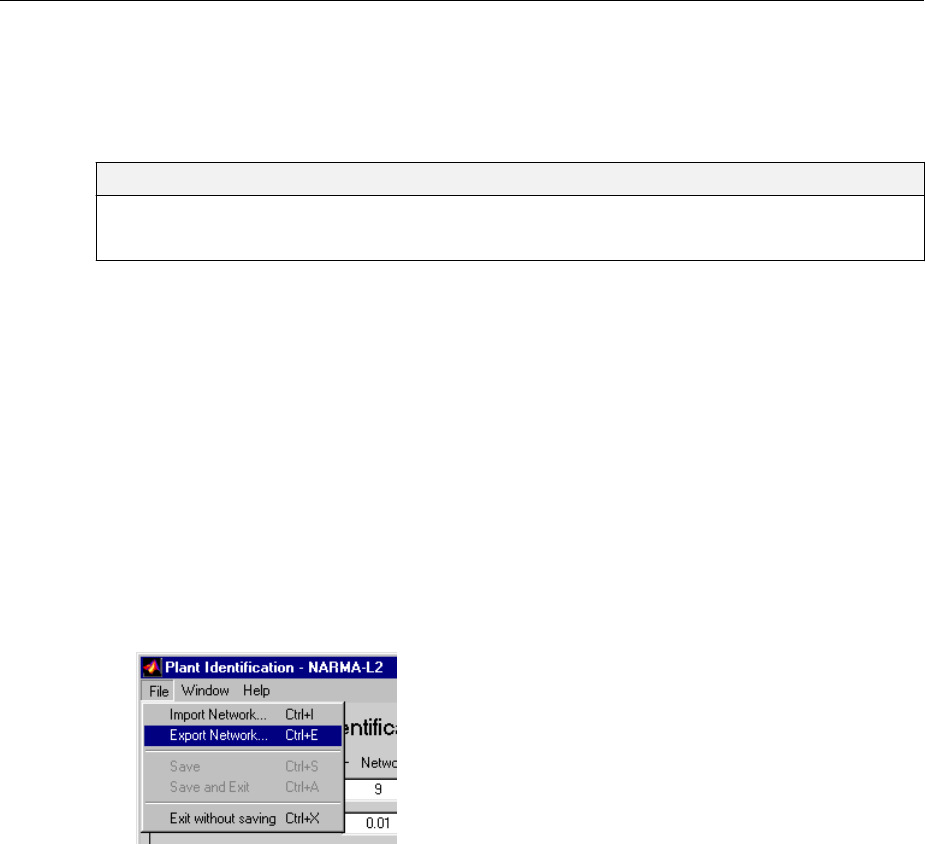

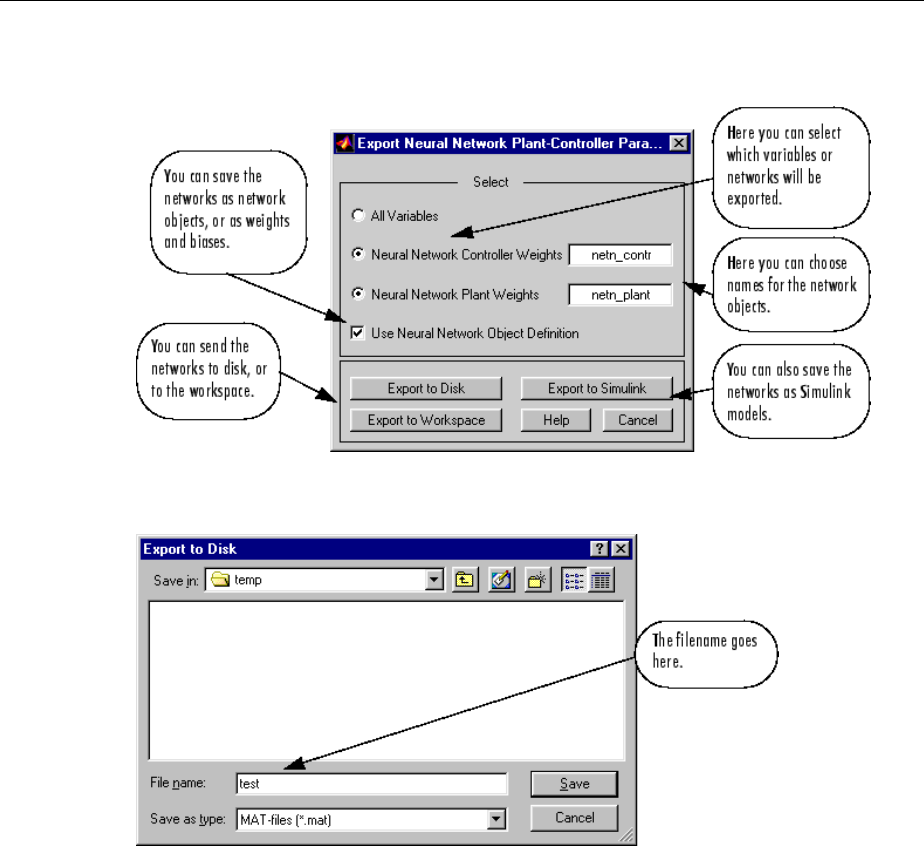

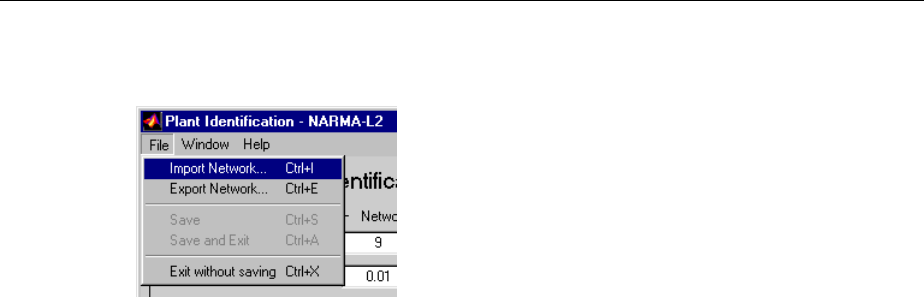

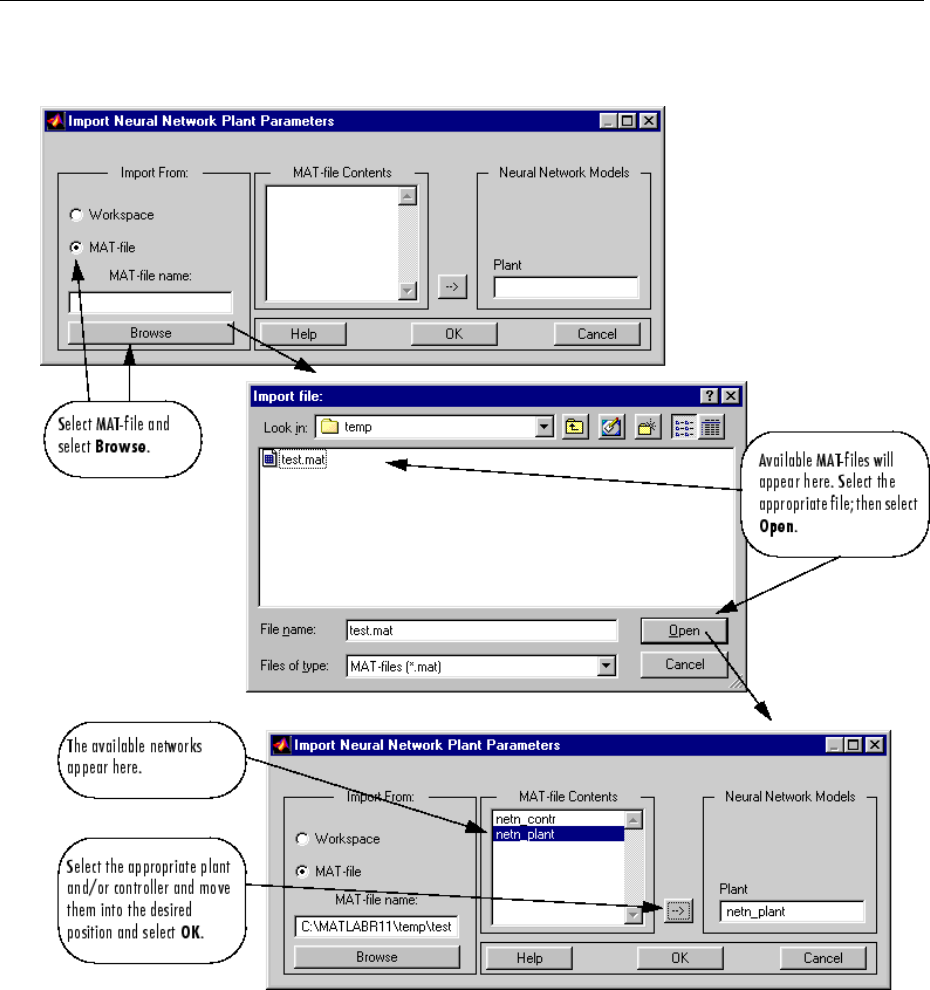

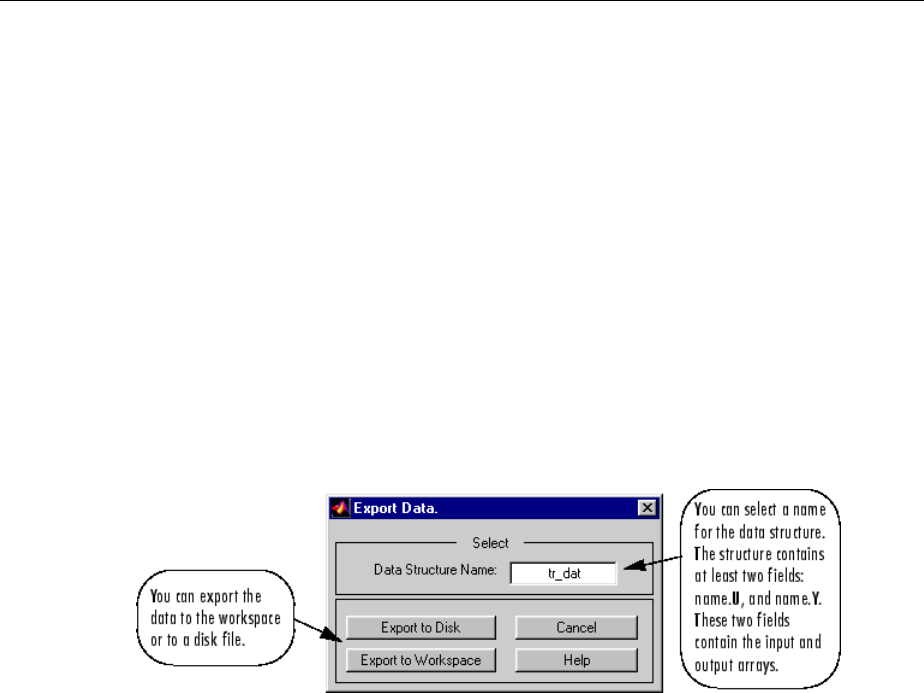

Import-Export Neural Network Simulink Control Systems .. 6-31

Import and Export Networks ........................ 6-31

Import and Export Training Data ..................... 6-35

Radial Basis Neural Networks

7

Introduction to Radial Basis Neural Networks ............. 7-2

Important Radial Basis Functions ...................... 7-2

xi

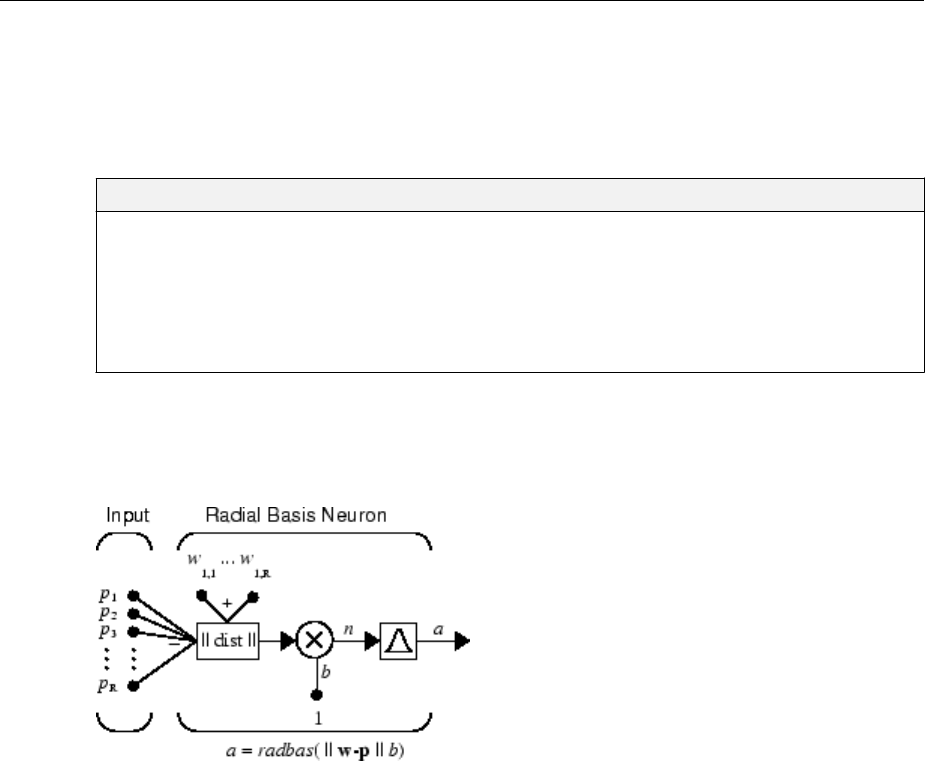

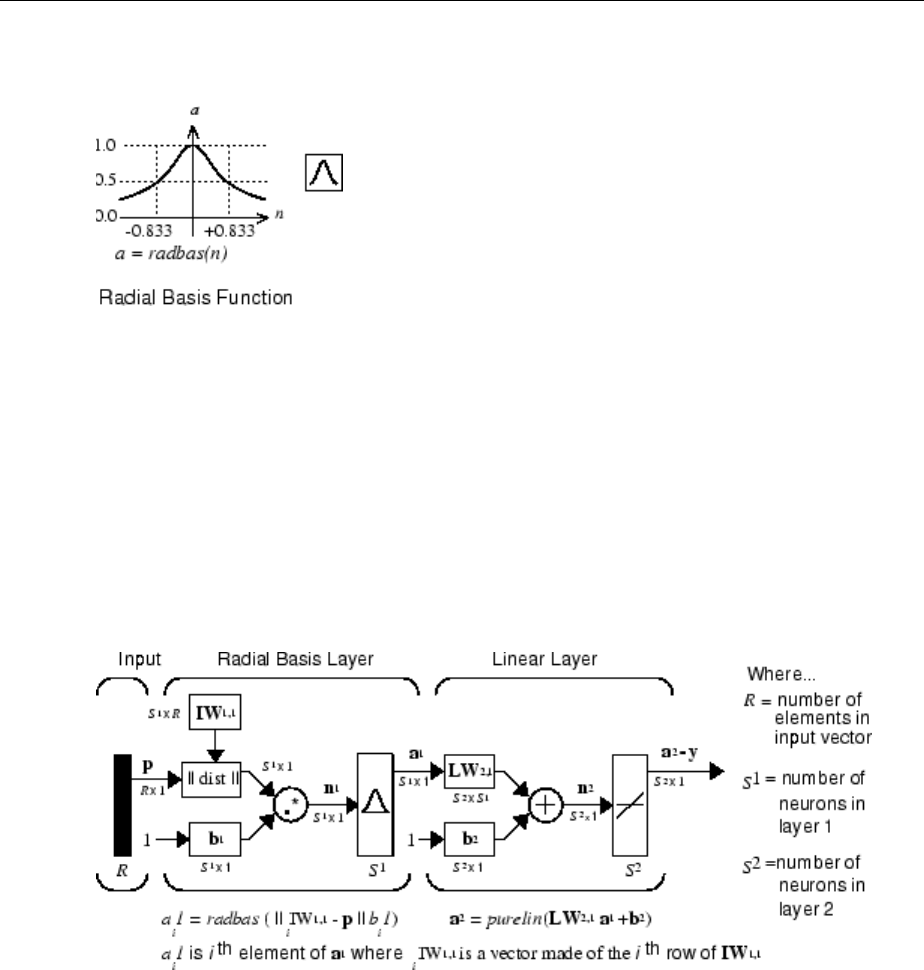

Radial Basis Neural Networks .......................... 7-3

Neuron Model .................................... 7-3

Network Architecture ............................... 7-4

Exact Design (newrbe) .............................. 7-6

More Eicient Design (newrb) ........................ 7-7

Examples ........................................ 7-8

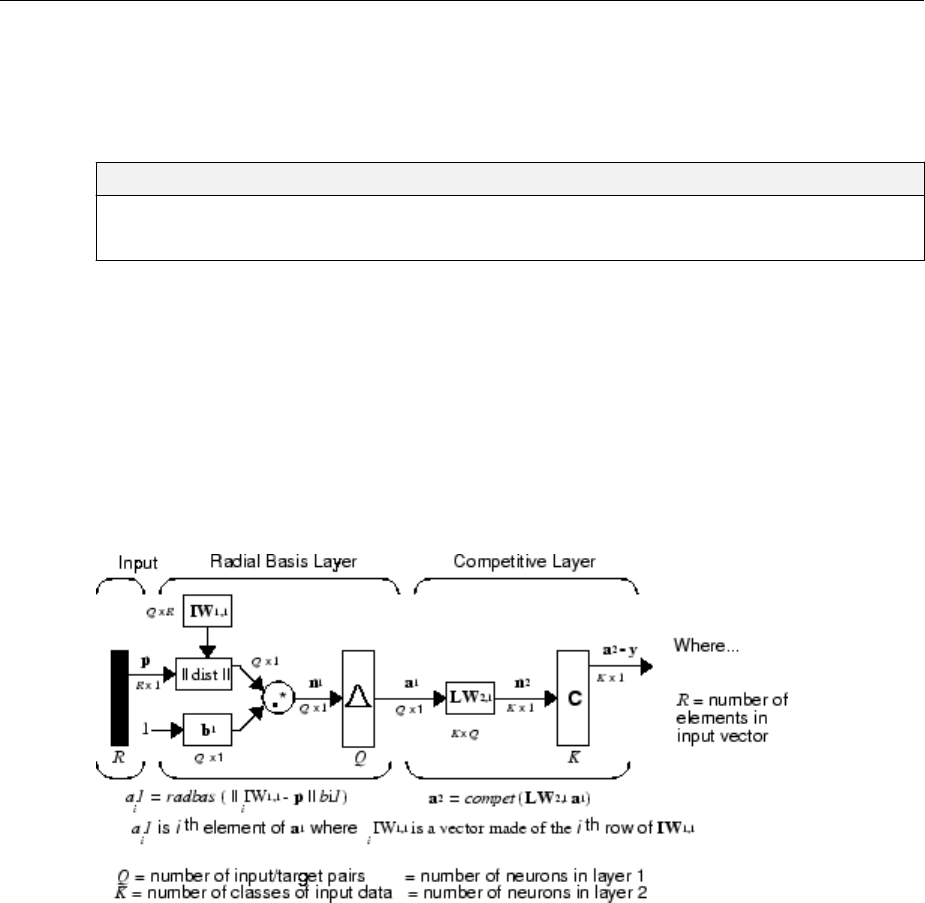

Probabilistic Neural Networks .......................... 7-9

Network Architecture ............................... 7-9

Design (newpnn) ................................. 7-10

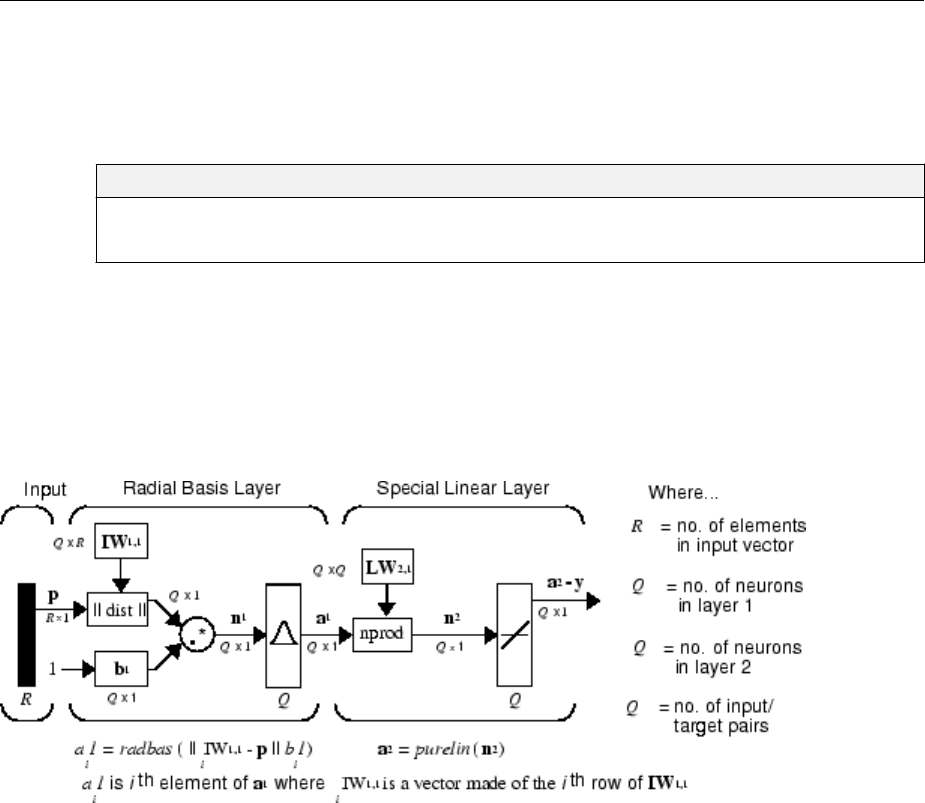

Generalized Regression Neural Networks ................ 7-12

Network Architecture .............................. 7-12

Design (newgrnn) ................................. 7-14

Self-Organizing and Learning Vector Quantization

Networks

8

Introduction to Self-Organizing and LVQ ................. 8-2

Important Self-Organizing and LVQ Functions ............. 8-2

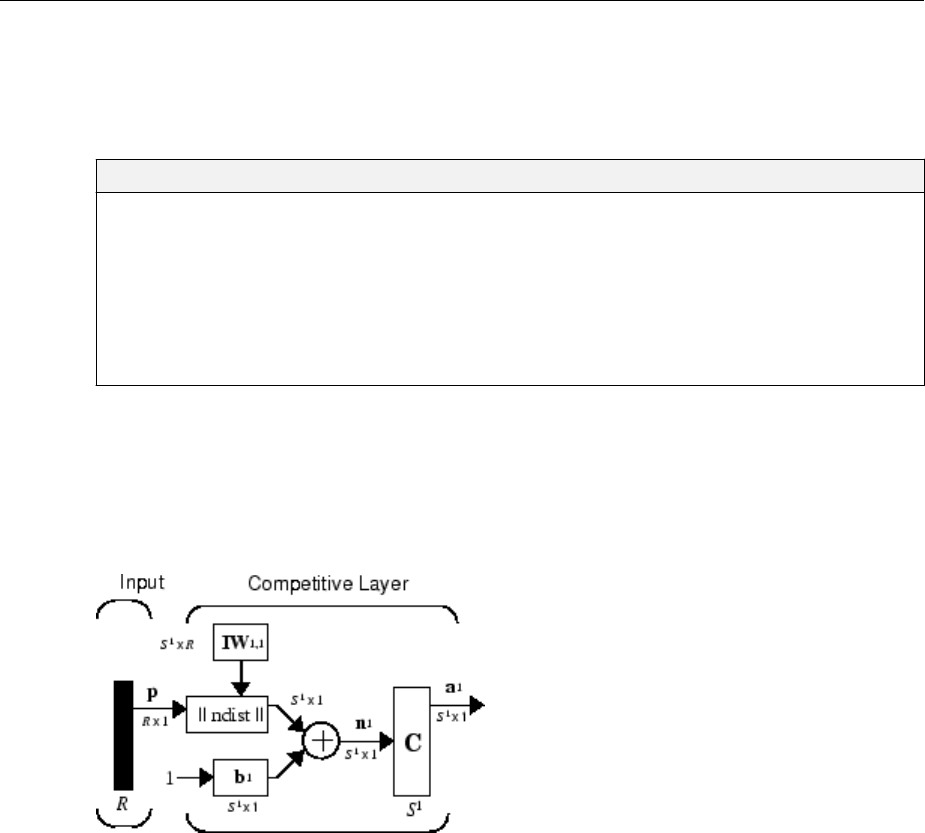

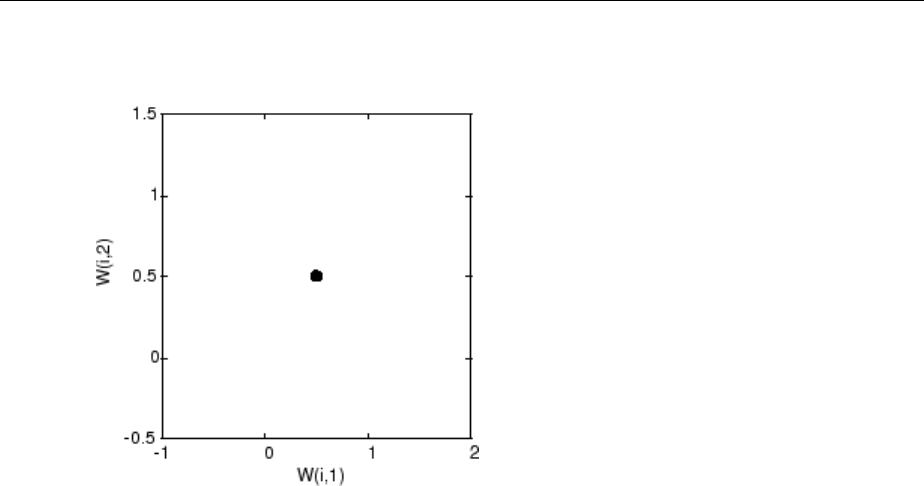

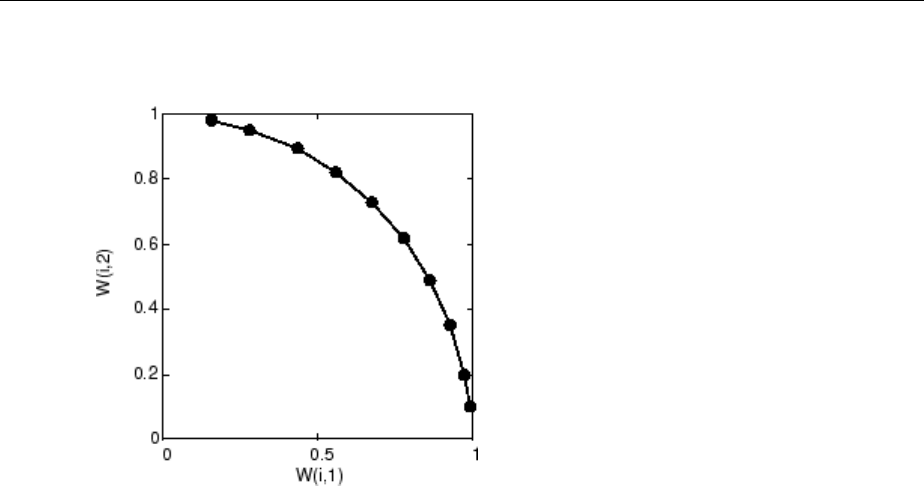

Cluster with a Competitive Neural Network ............... 8-3

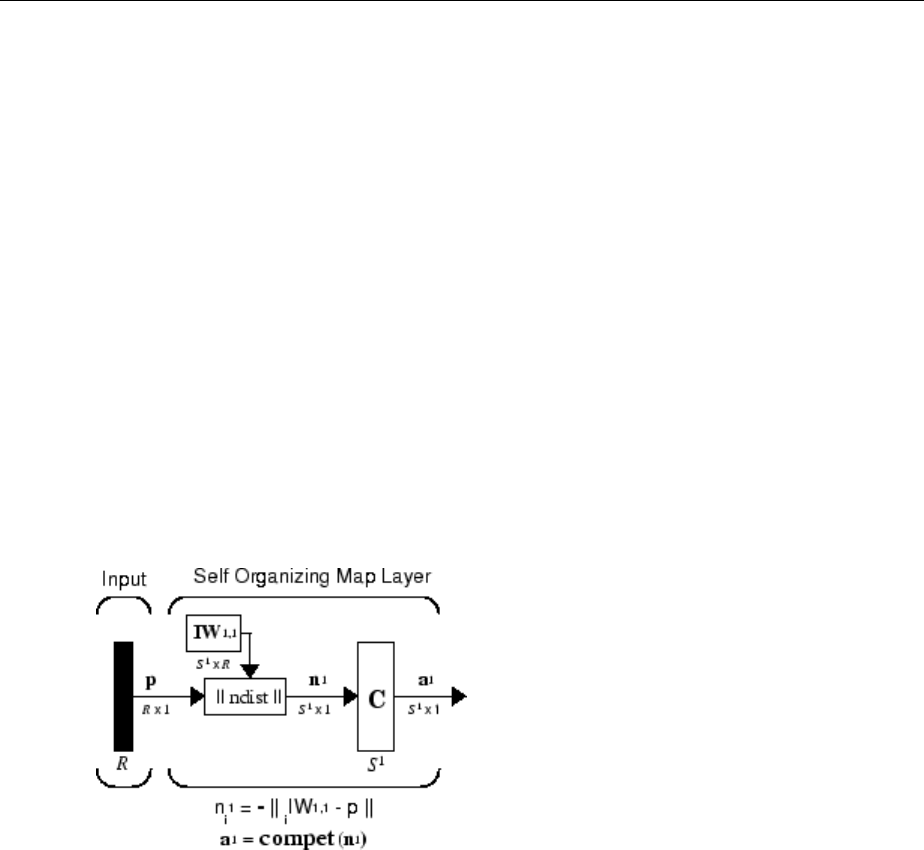

Architecture ...................................... 8-3

Create a Competitive Neural Network .................. 8-4

Kohonen Learning Rule (learnk) ....................... 8-5

Bias Learning Rule (learncon) ......................... 8-5

Training ......................................... 8-6

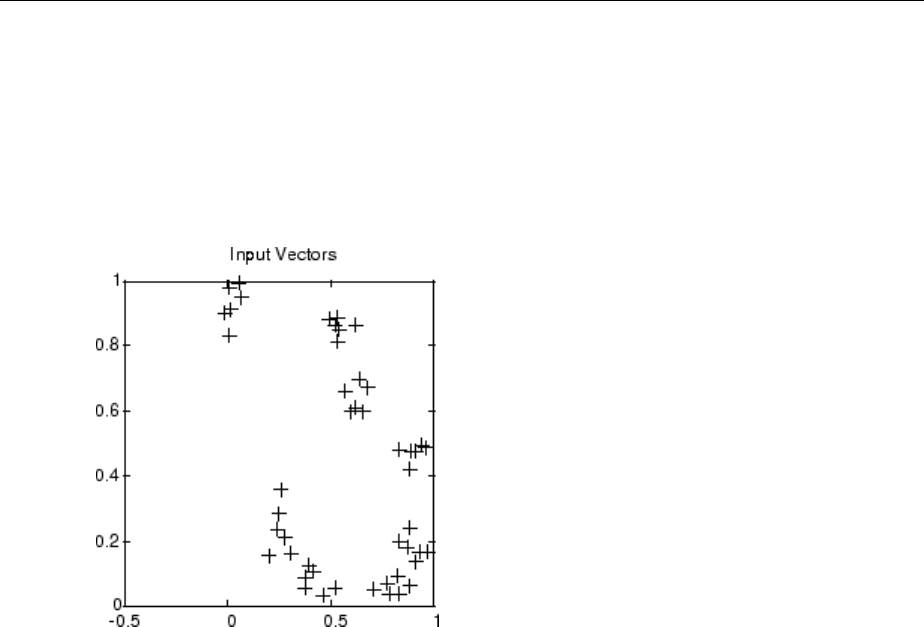

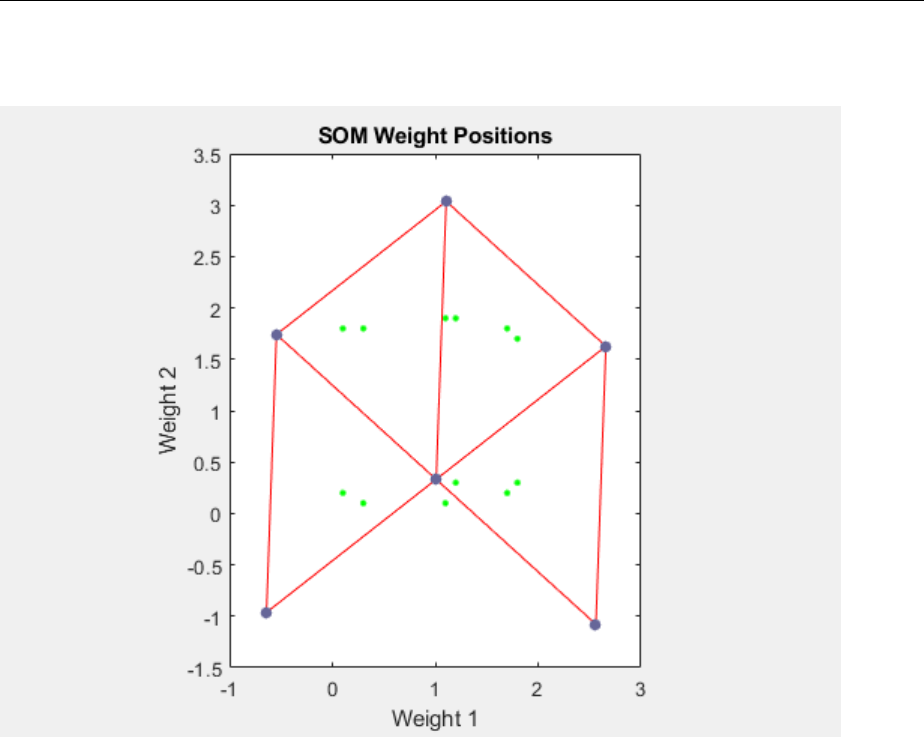

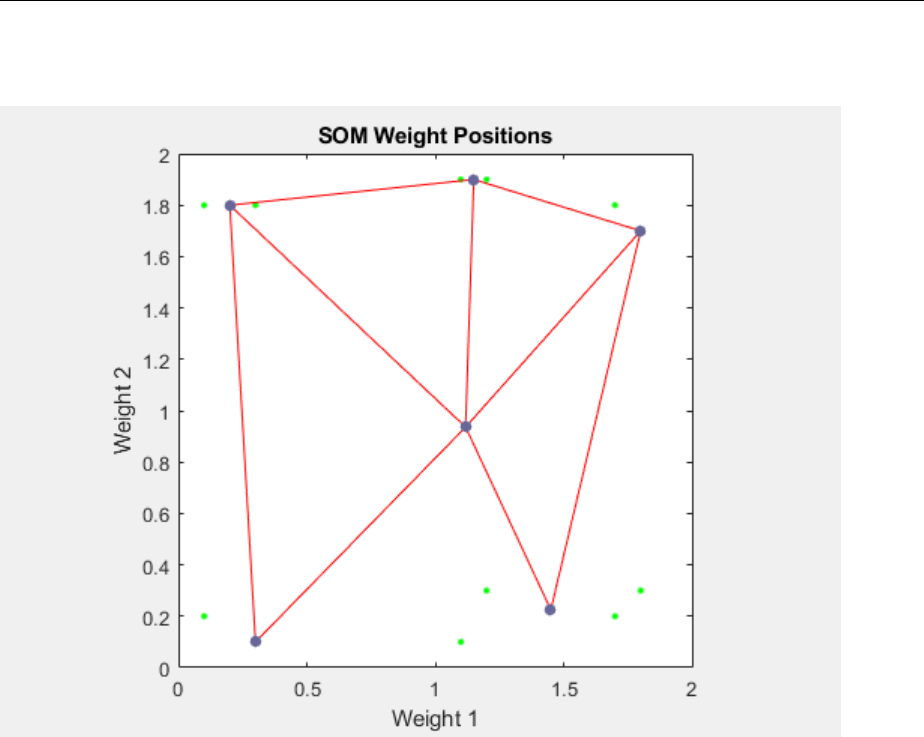

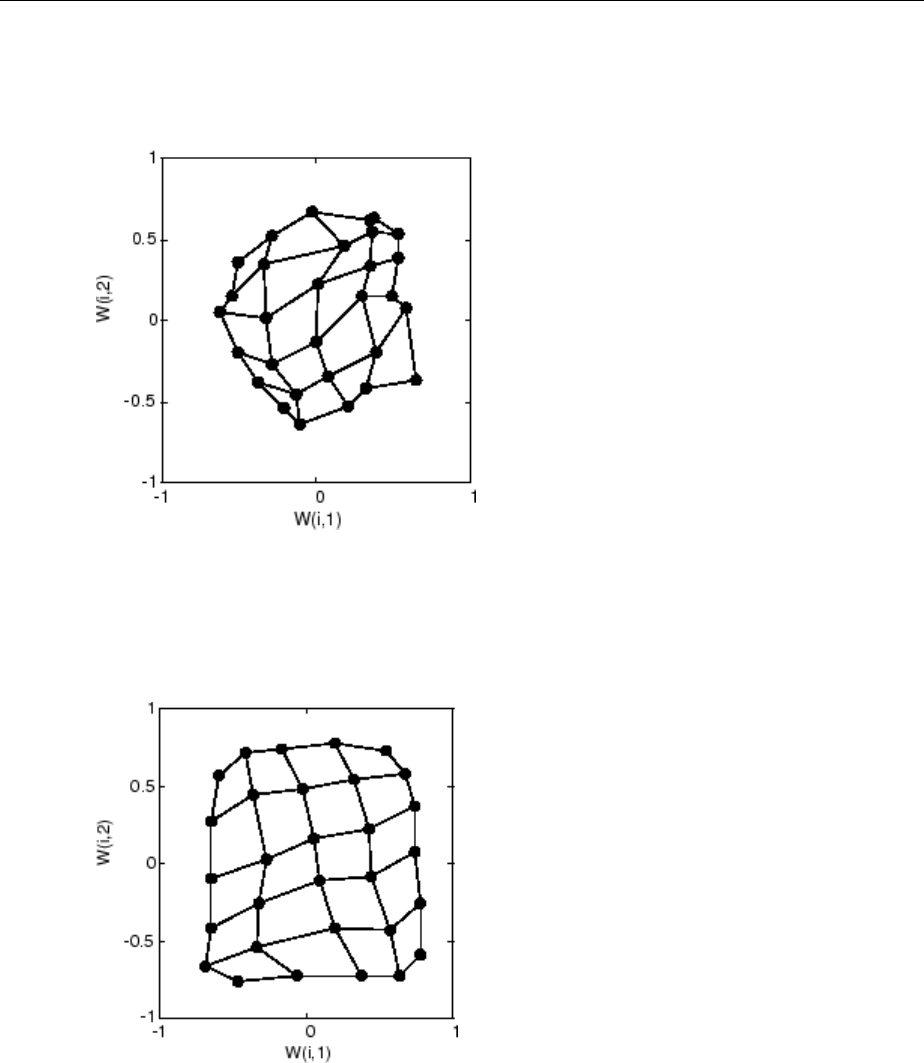

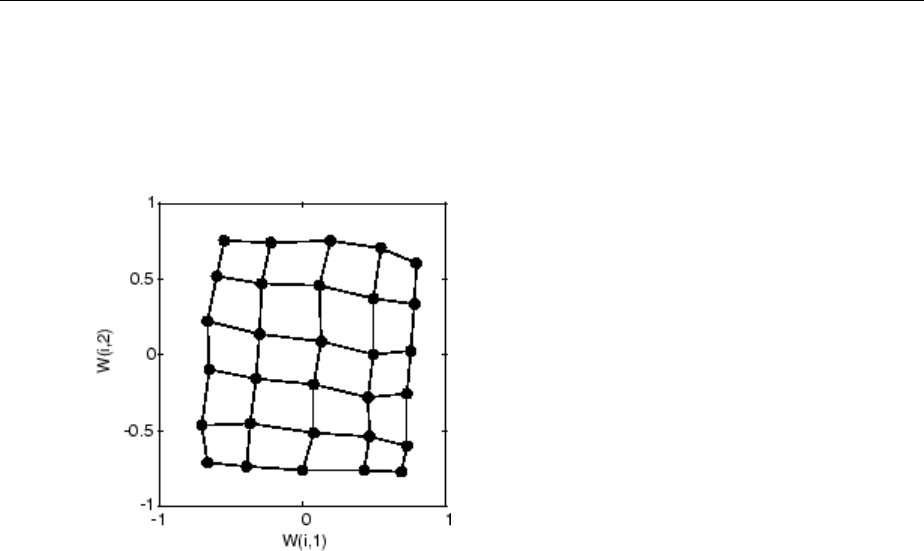

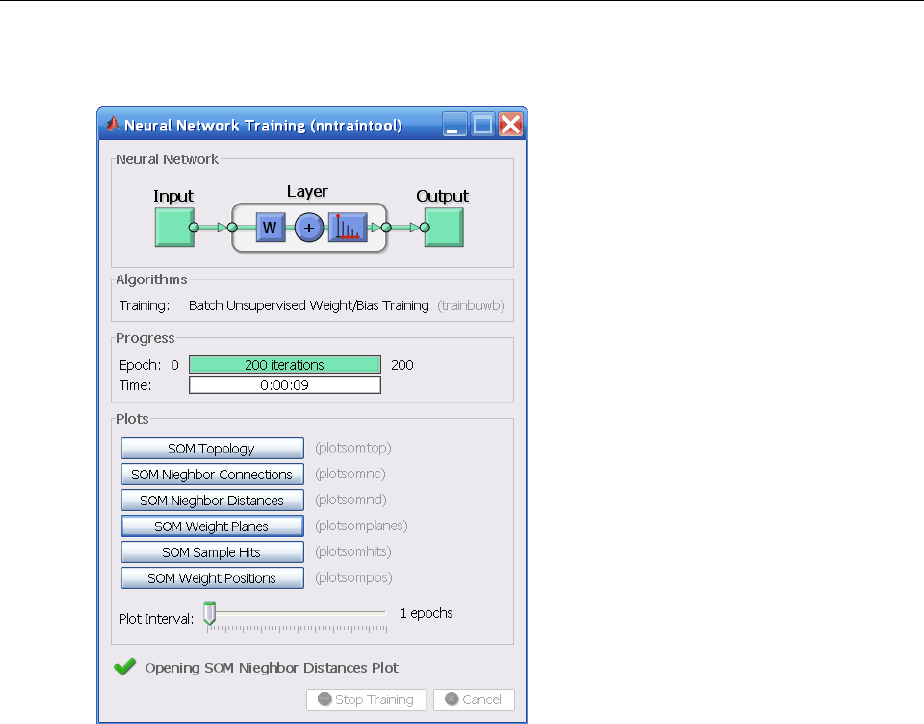

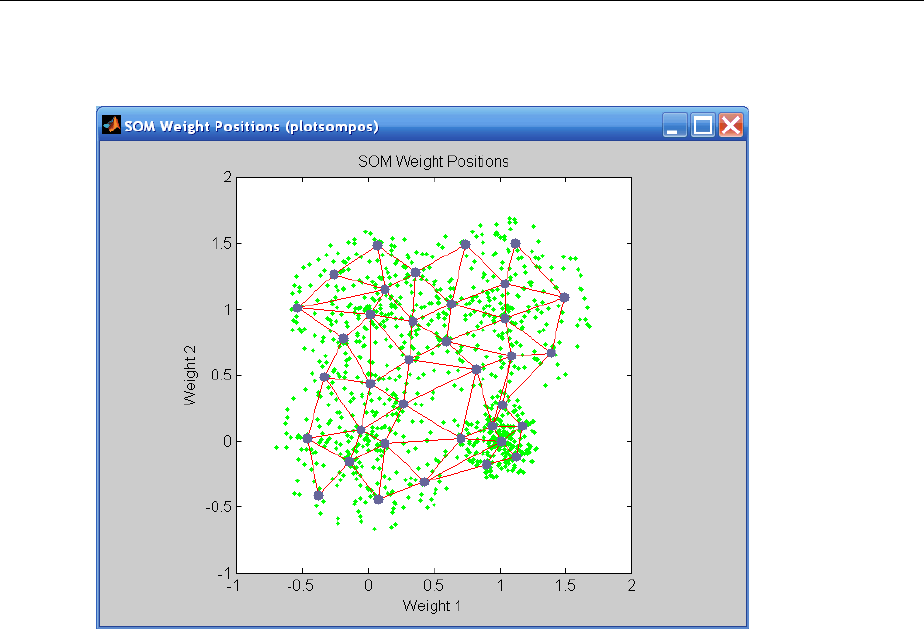

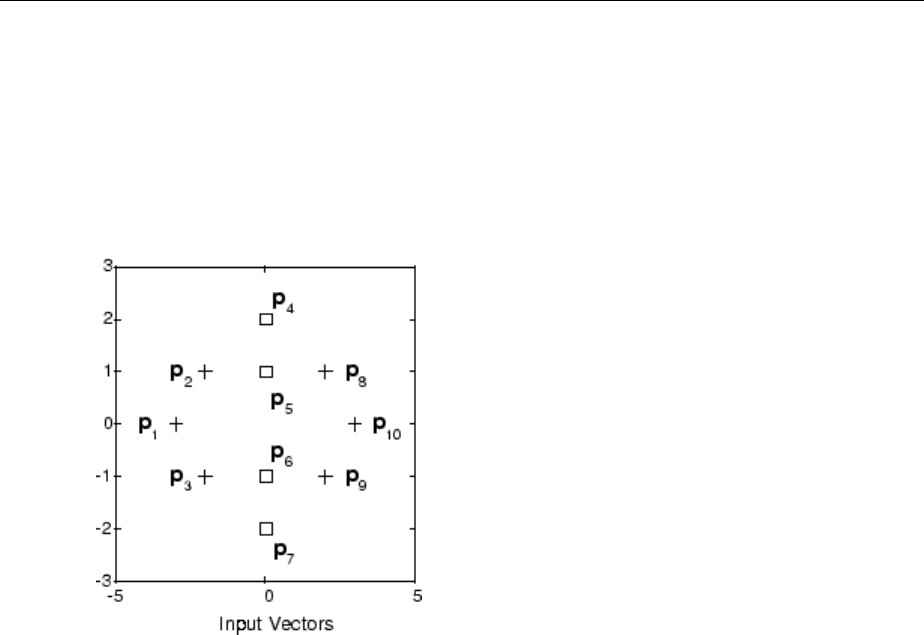

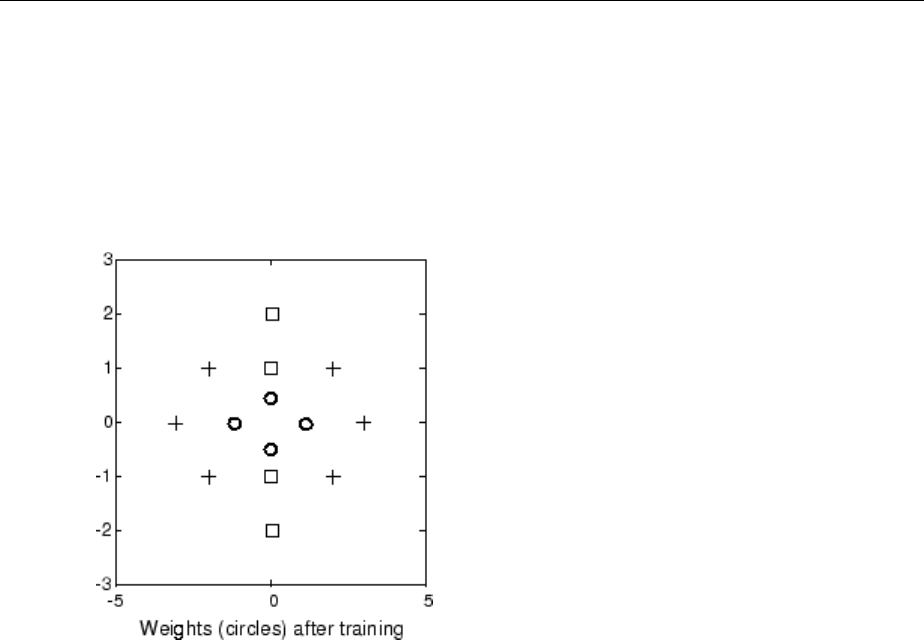

Graphical Example ................................. 8-8

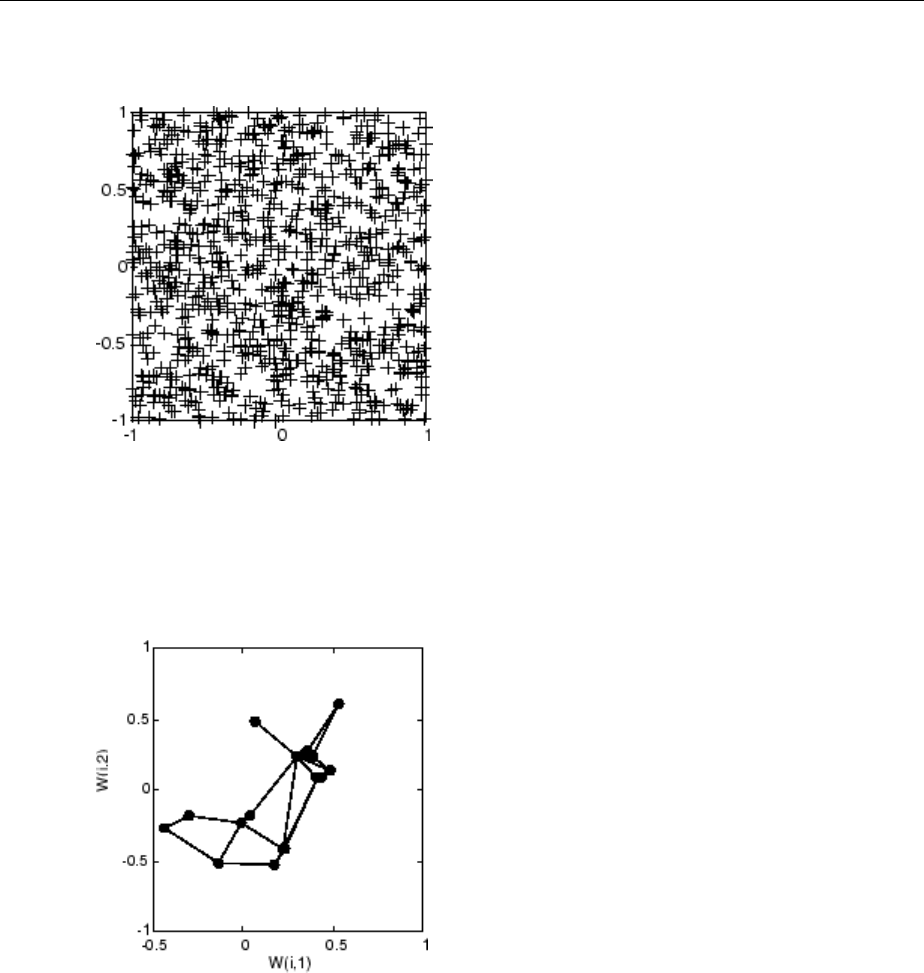

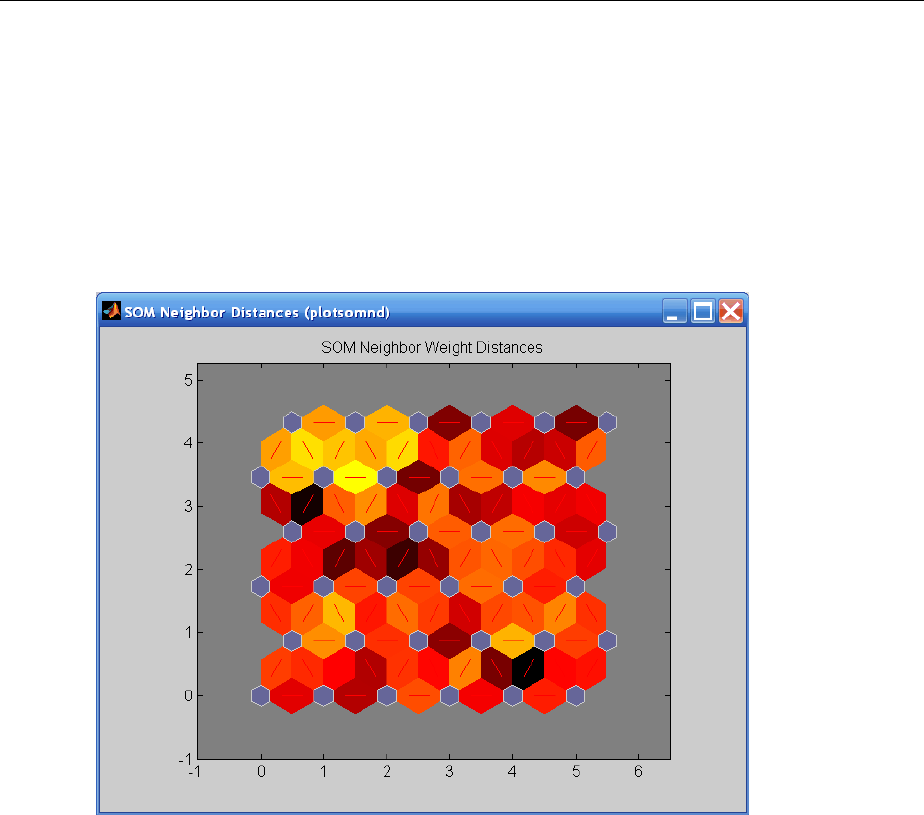

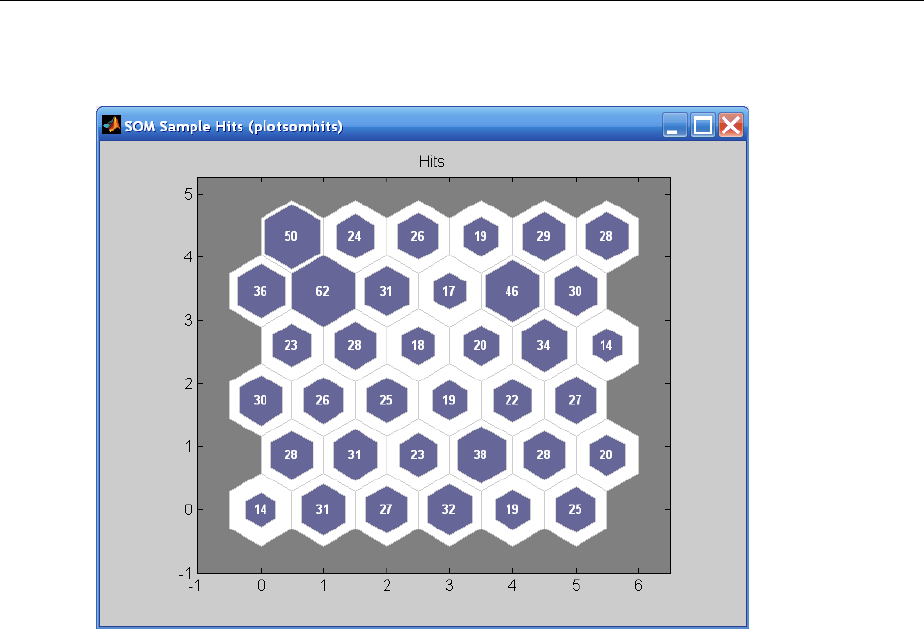

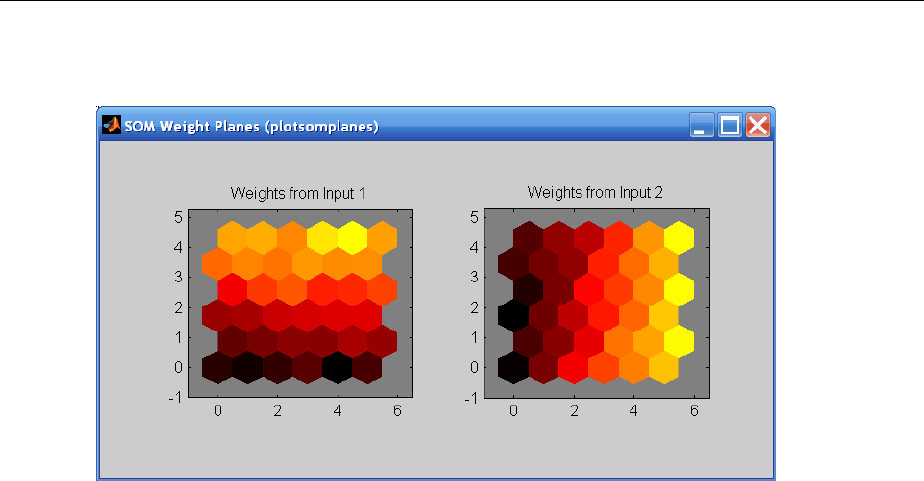

Cluster with Self-Organizing Map Neural Network ......... 8-9

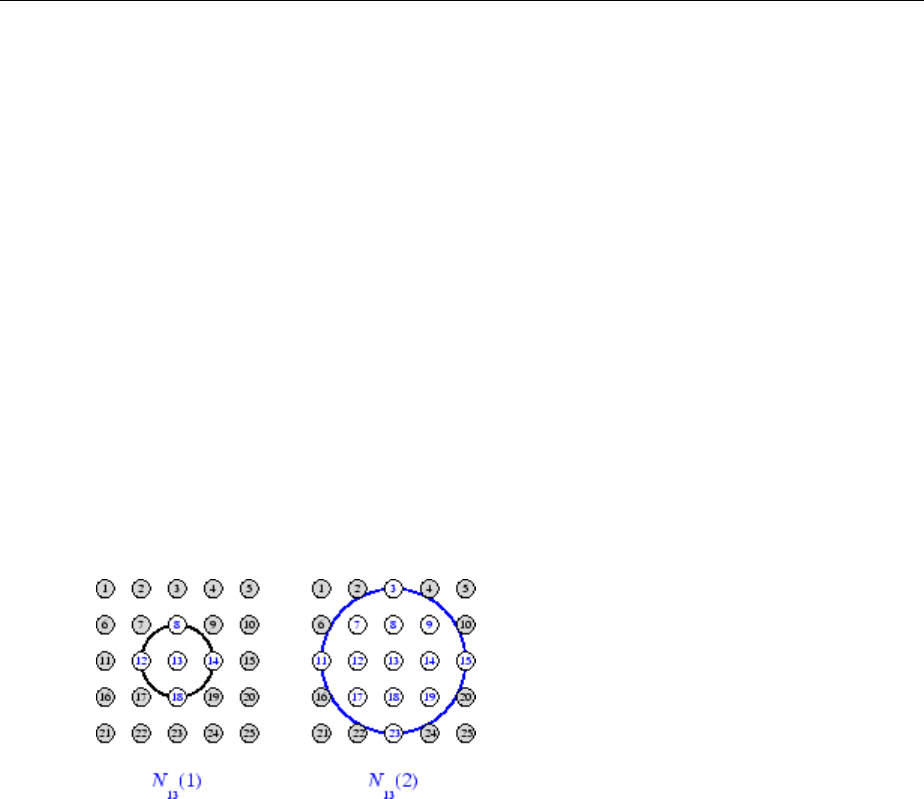

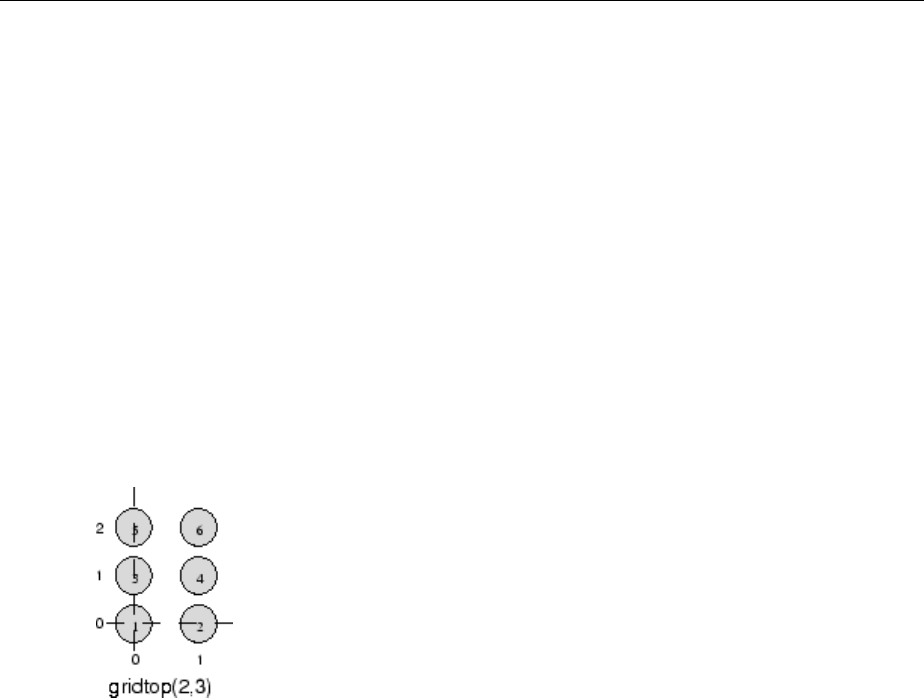

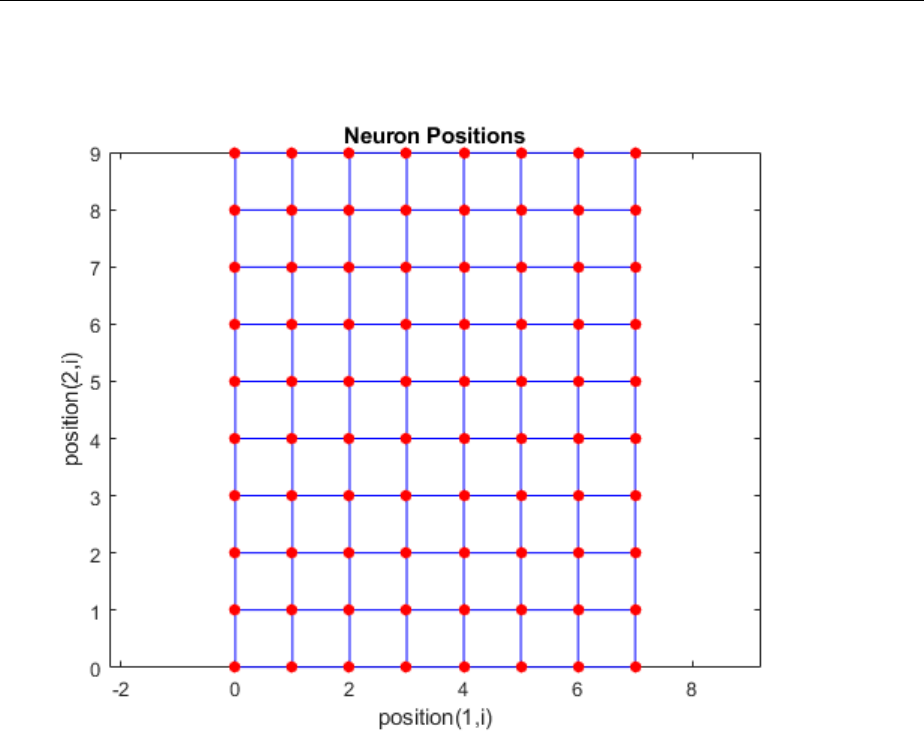

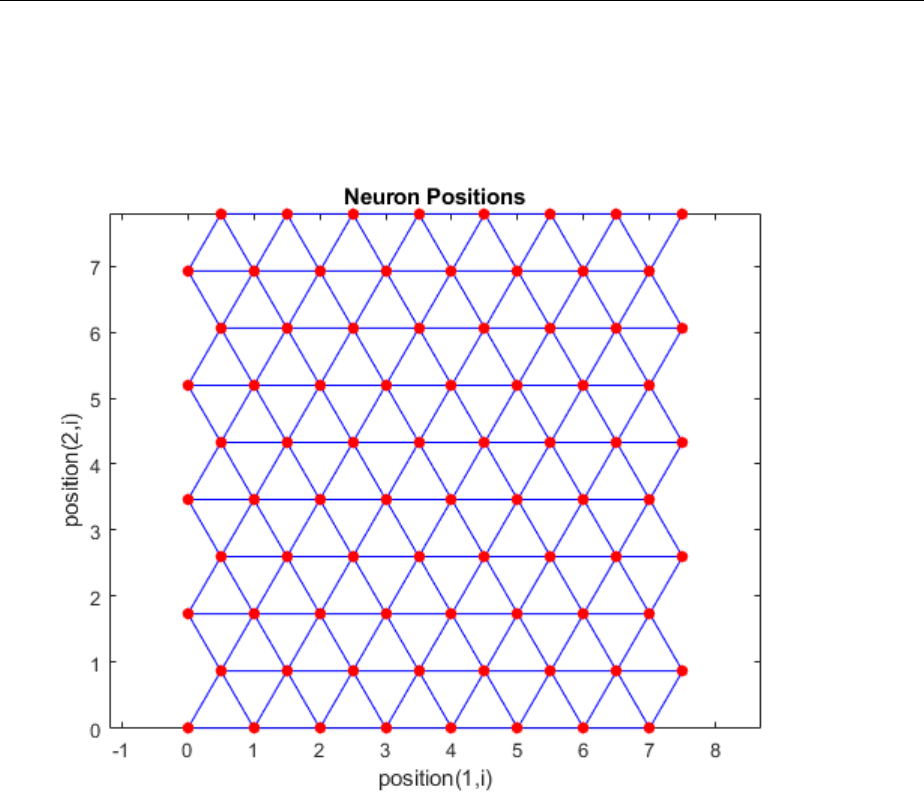

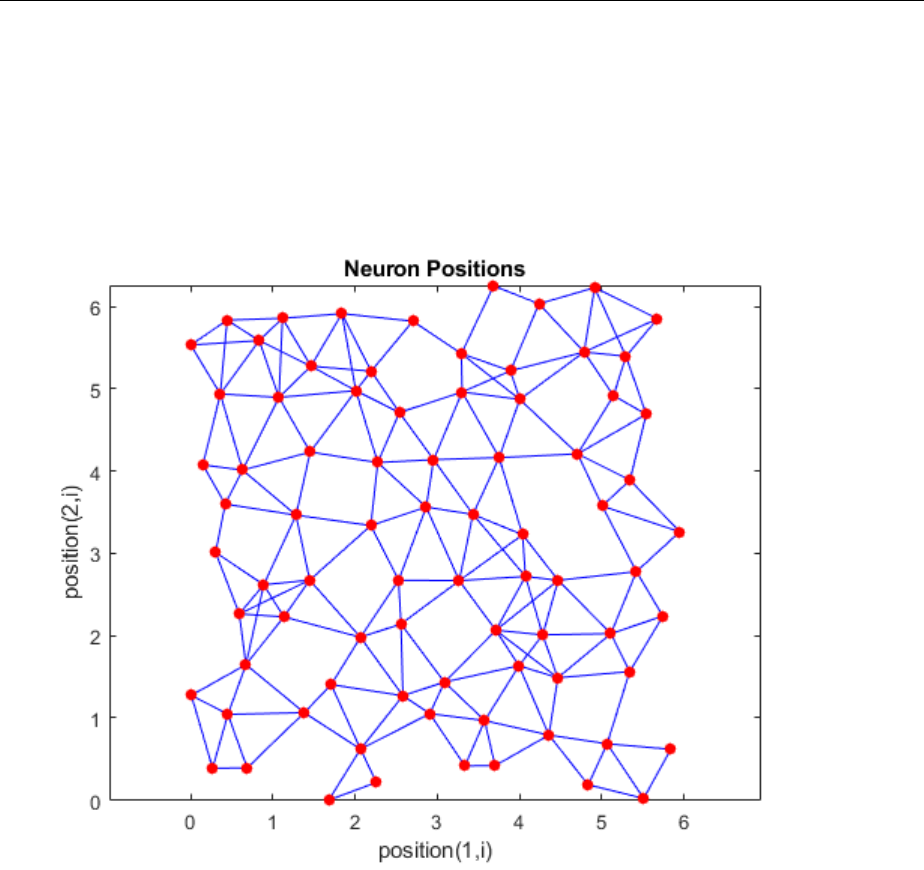

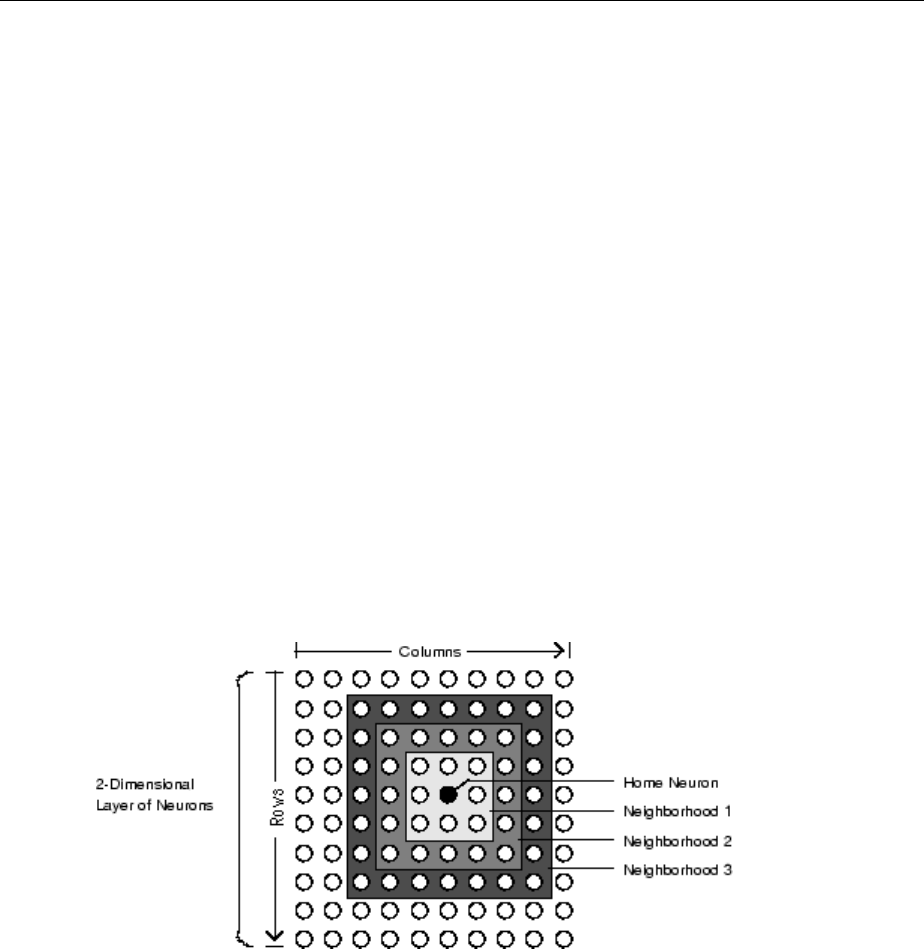

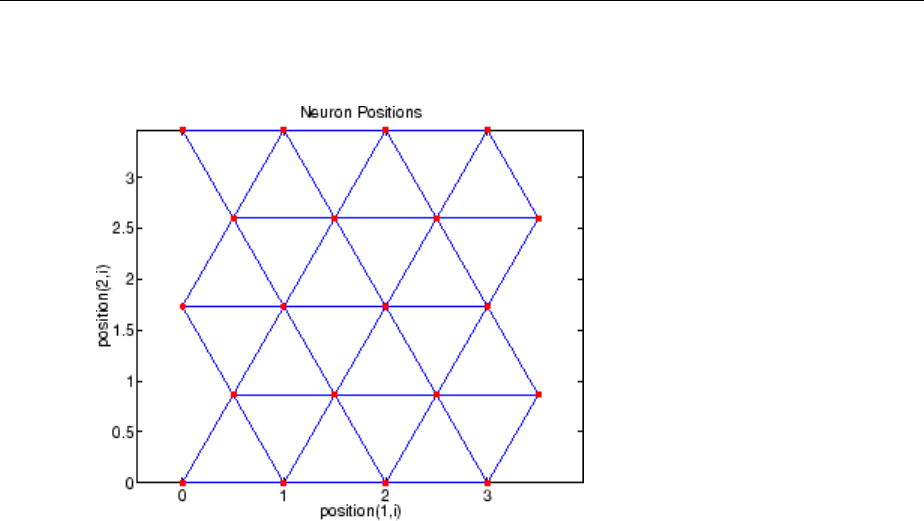

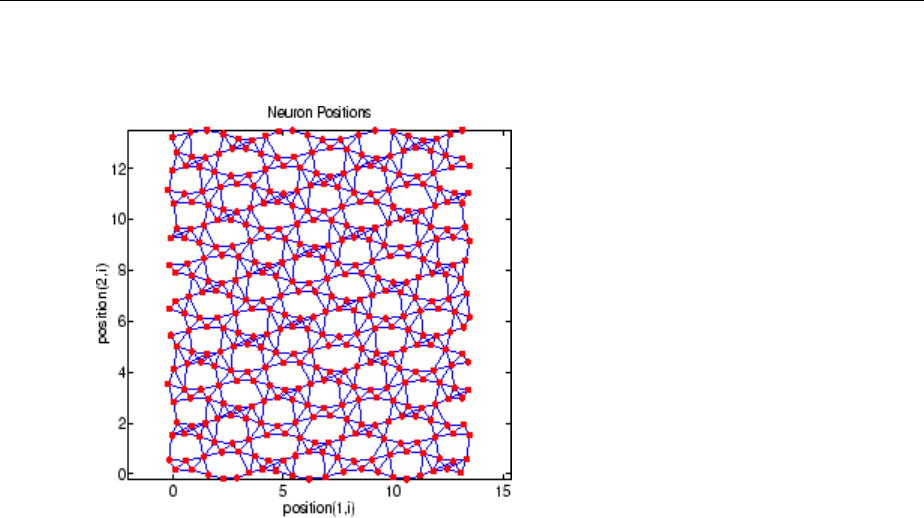

Topologies (gridtop, hextop, randtop) .................. 8-11

Distance Functions (dist, linkdist, mandist, boxdist) ....... 8-14

Architecture ..................................... 8-17

Create a Self-Organizing Map Neural Network

(selforgmap) ................................... 8-18

Training (learnsomb) .............................. 8-19

Examples ....................................... 8-22

xii Contents

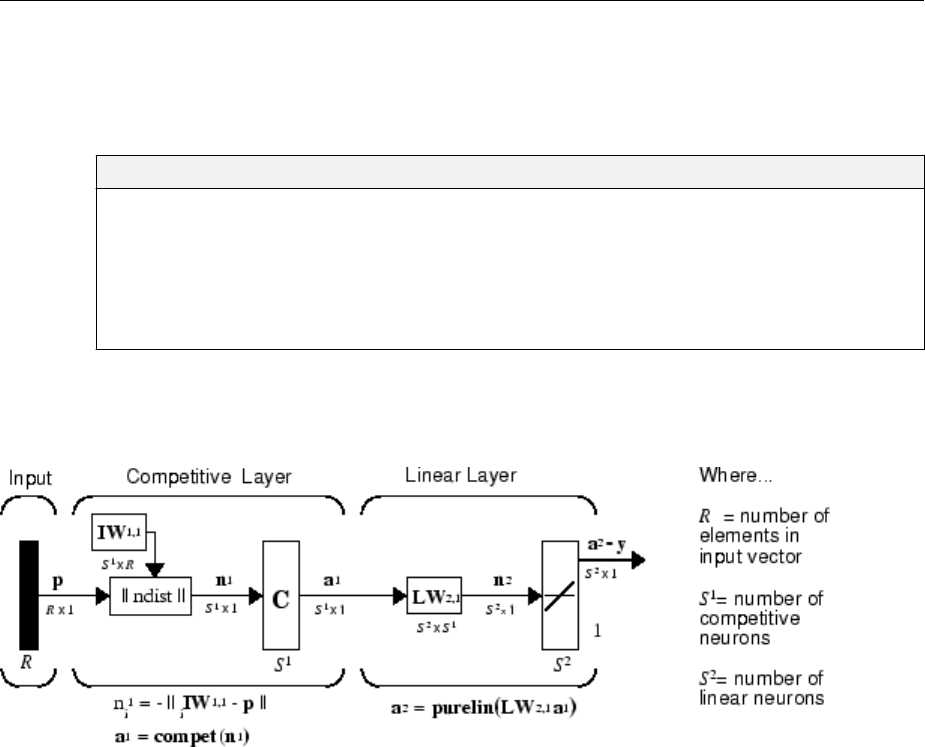

Learning Vector Quantization (LVQ) Neural Networks ..... 8-34

Architecture ..................................... 8-34

Creating an LVQ Network ........................... 8-35

LVQ1 Learning Rule (learnlv1) ....................... 8-38

Training ........................................ 8-39

Supplemental LVQ2.1 Learning Rule (learnlv2) ........... 8-41

Adaptive Filters and Adaptive Training

9

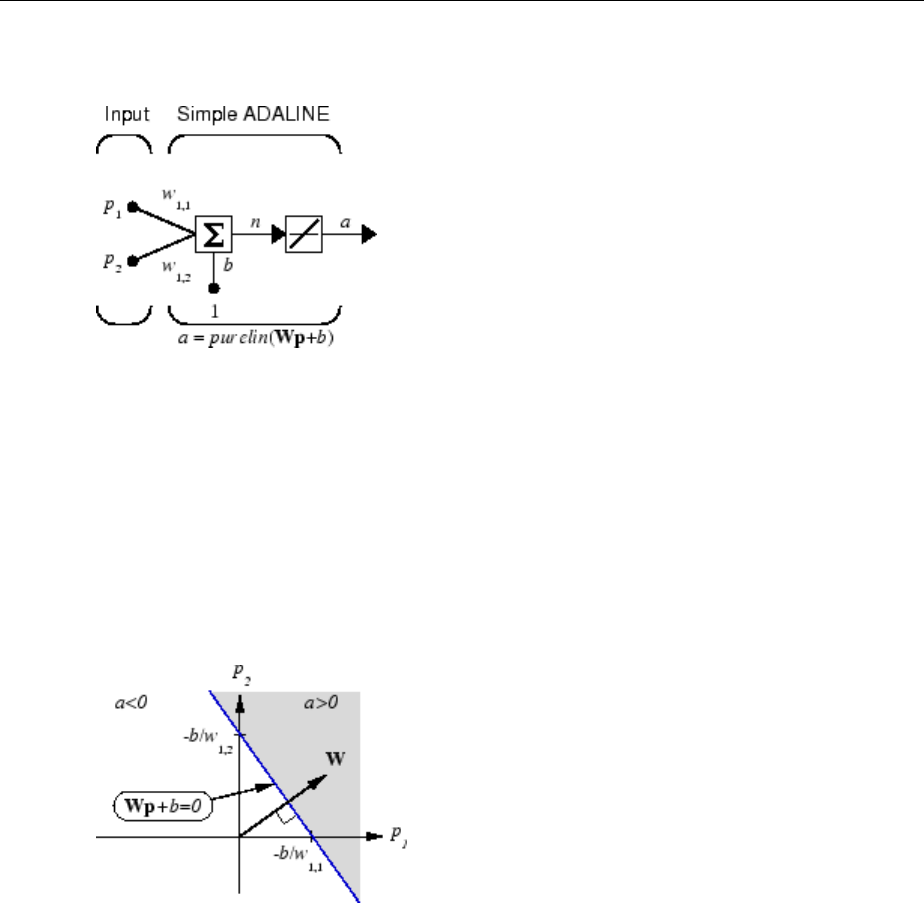

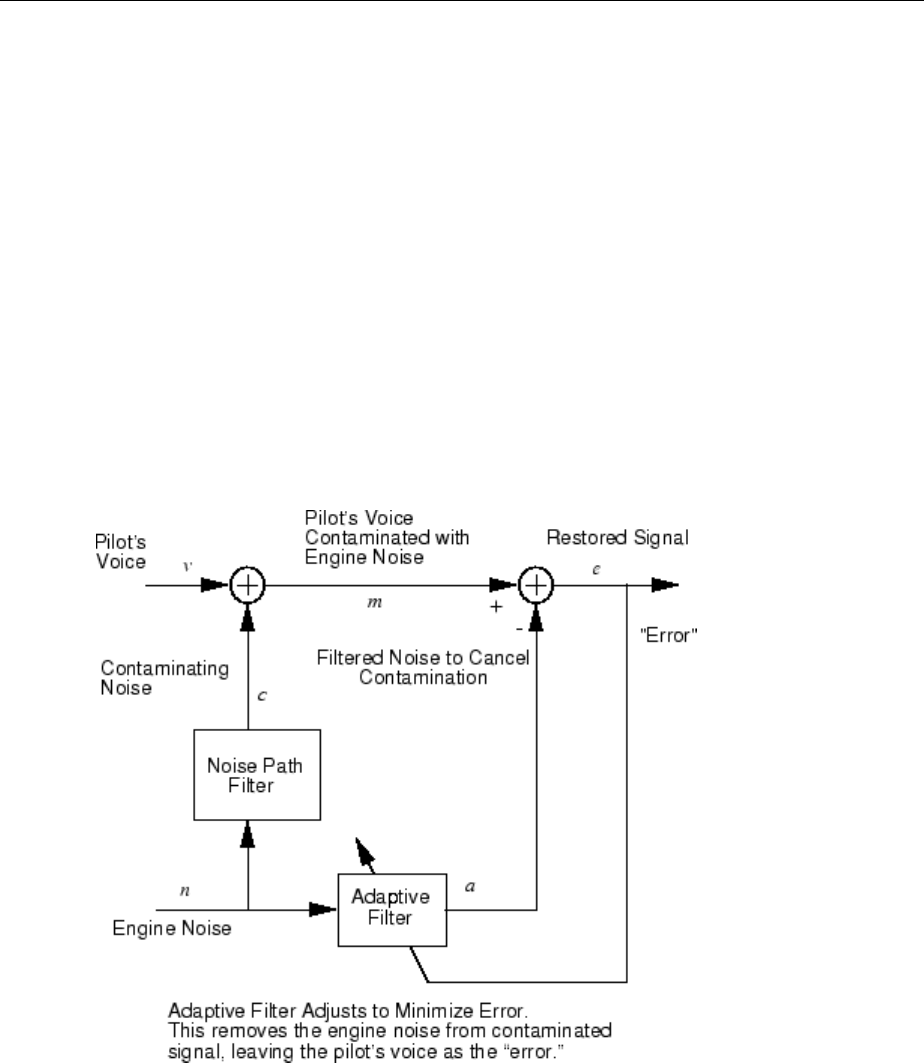

Adaptive Neural Network Filters ........................ 9-2

Adaptive Functions ................................. 9-2

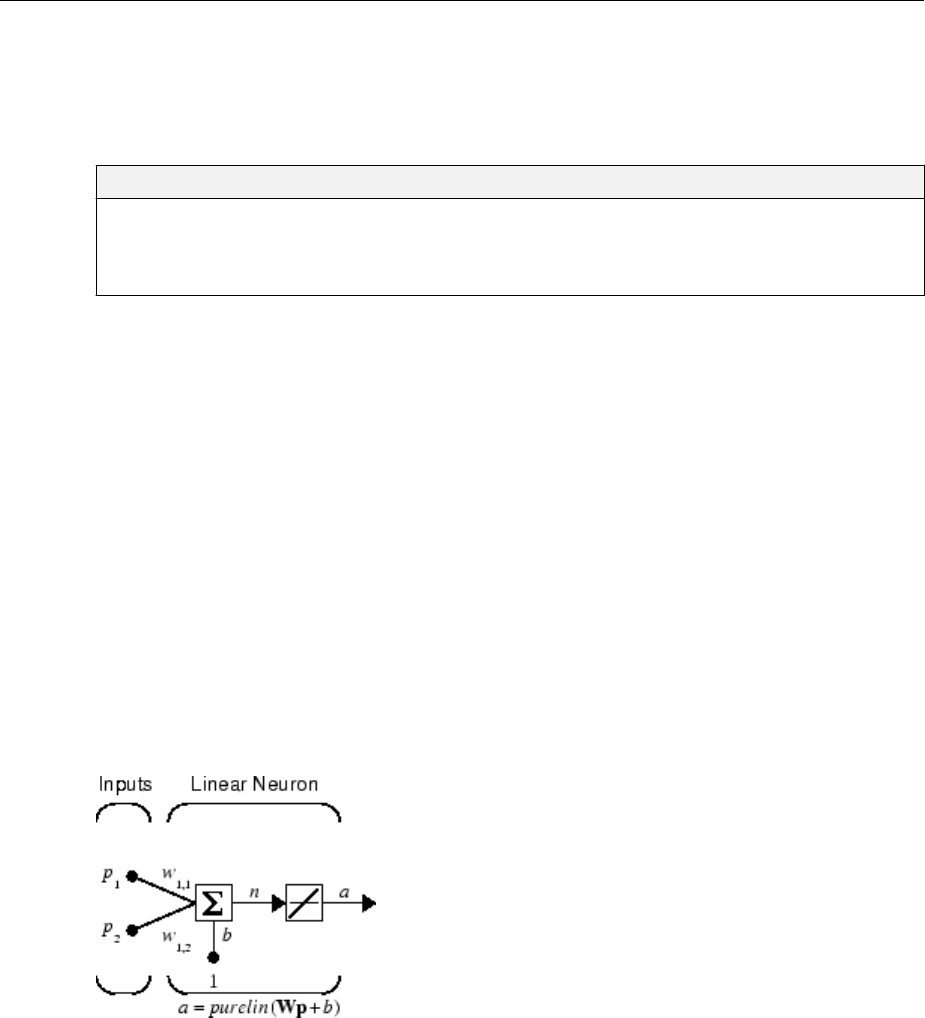

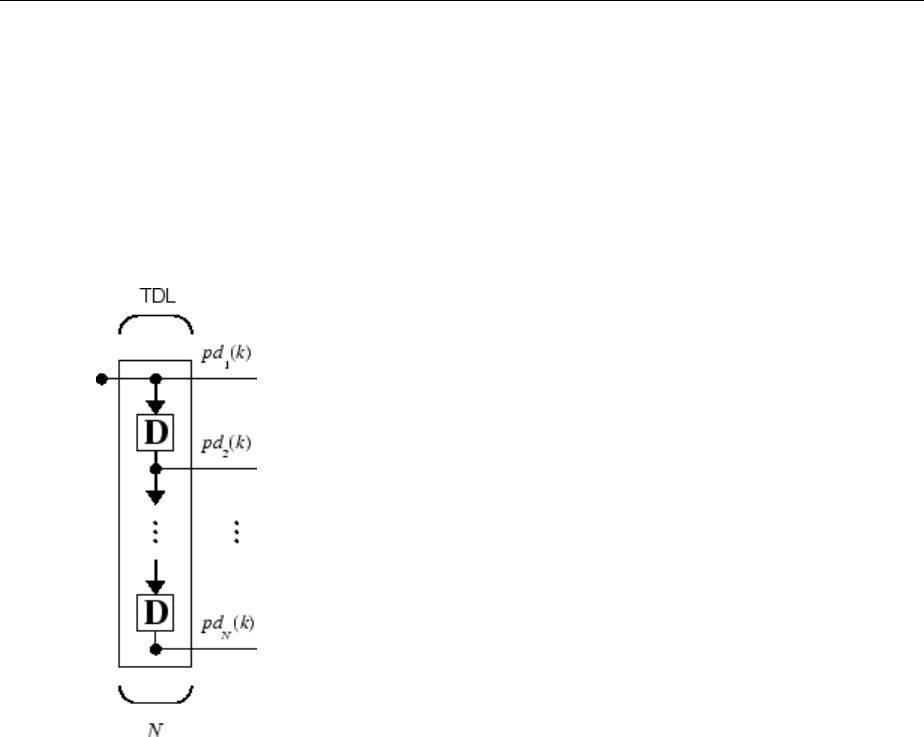

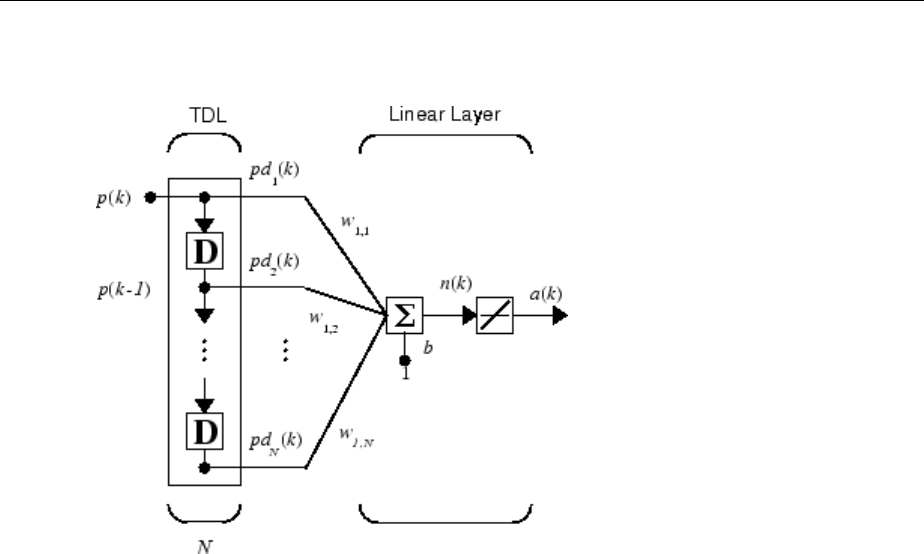

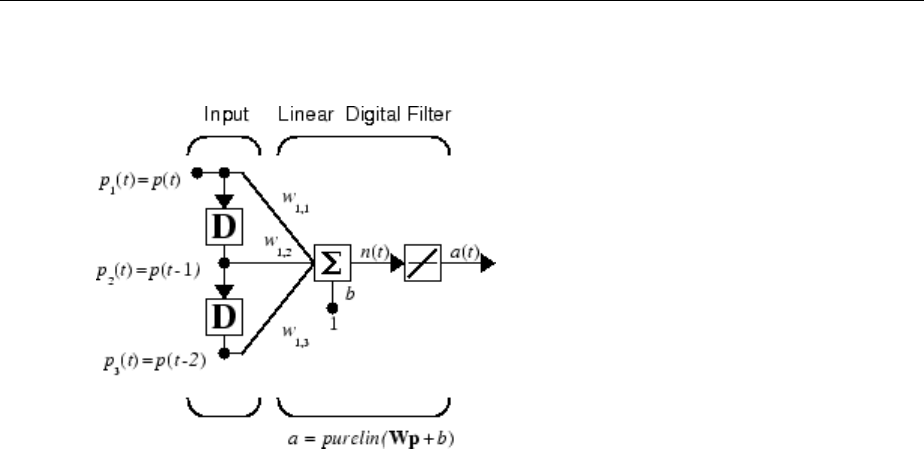

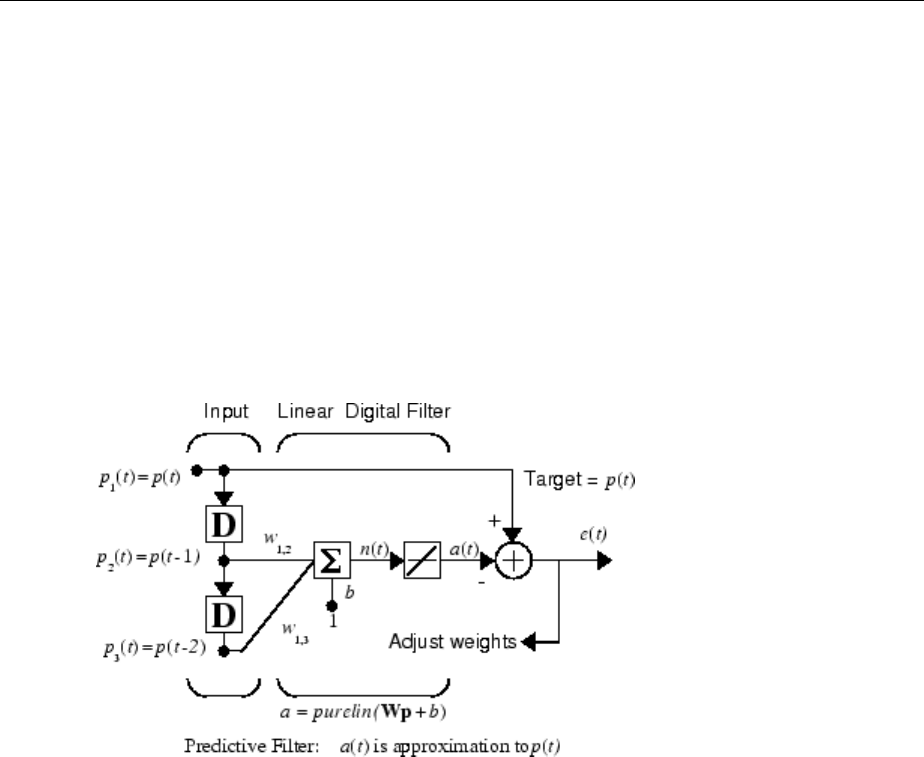

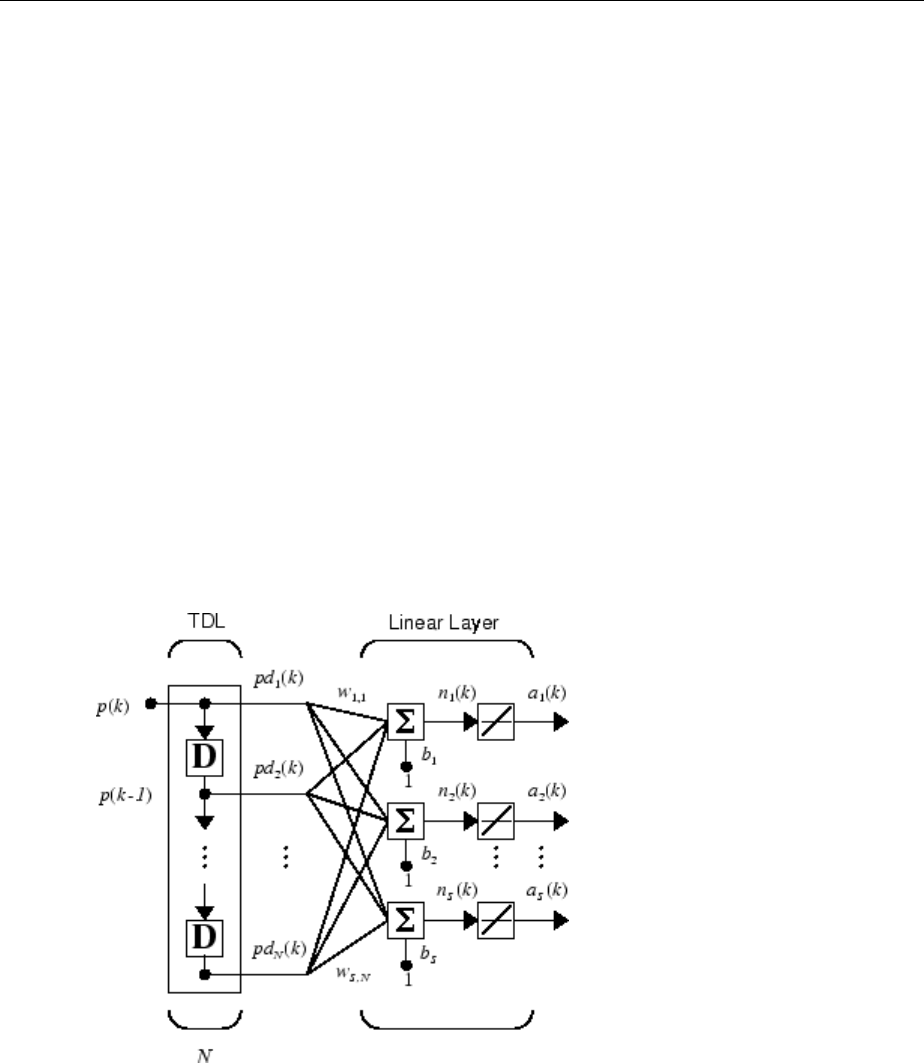

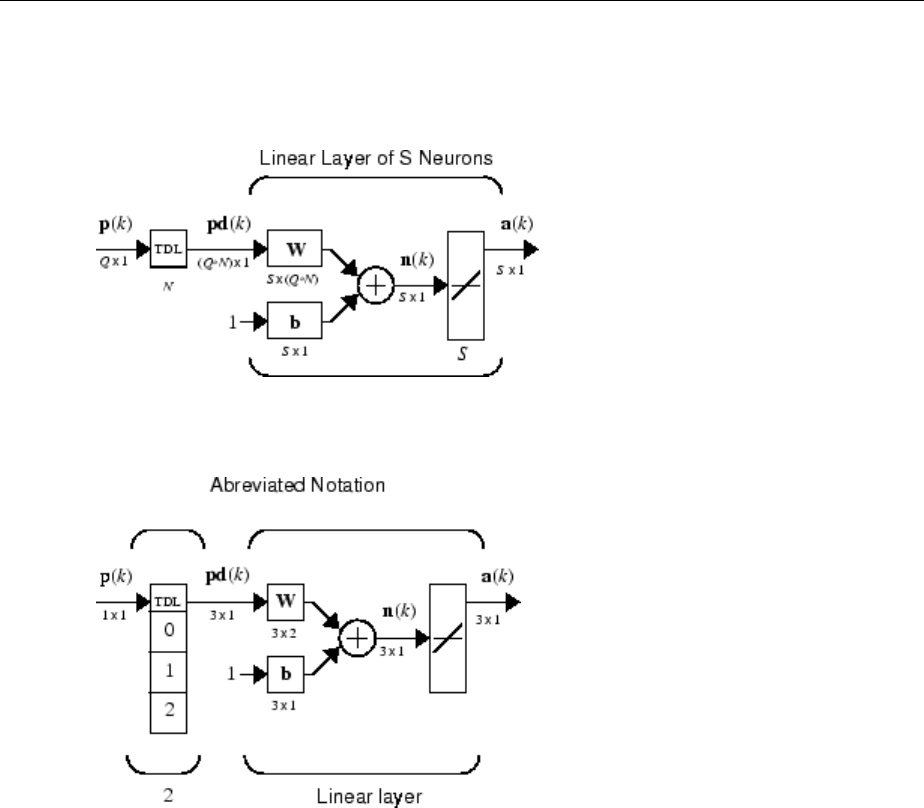

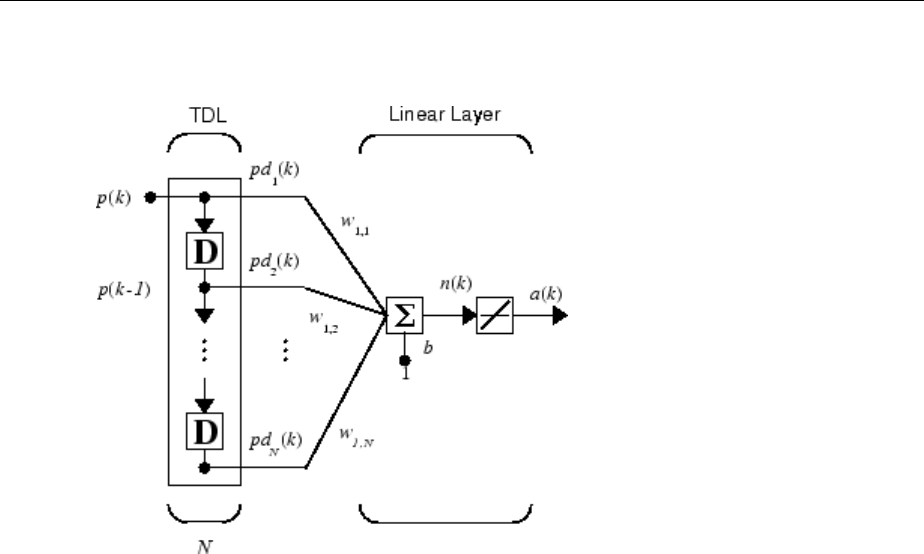

Linear Neuron Model ............................... 9-3

Adaptive Linear Network Architecture .................. 9-4

Least Mean Square Error ............................ 9-6

LMS Algorithm (learnwh) ............................ 9-7

Adaptive Filtering (adapt) ............................ 9-7

Advanced Topics

10

Neural Networks with Parallel and GPU Computing ....... 10-2

Deep Learning ................................... 10-2

Modes of Parallelism .............................. 10-2

Distributed Computing ............................. 10-3

Single GPU Computing ............................. 10-5

Distributed GPU Computing ......................... 10-8

Parallel Time Series .............................. 10-10

Parallel Availability, Fallbacks, and Feedback ........... 10-10

Optimize Neural Network Training Speed and Memory .... 10-12

Memory Reduction ............................... 10-12

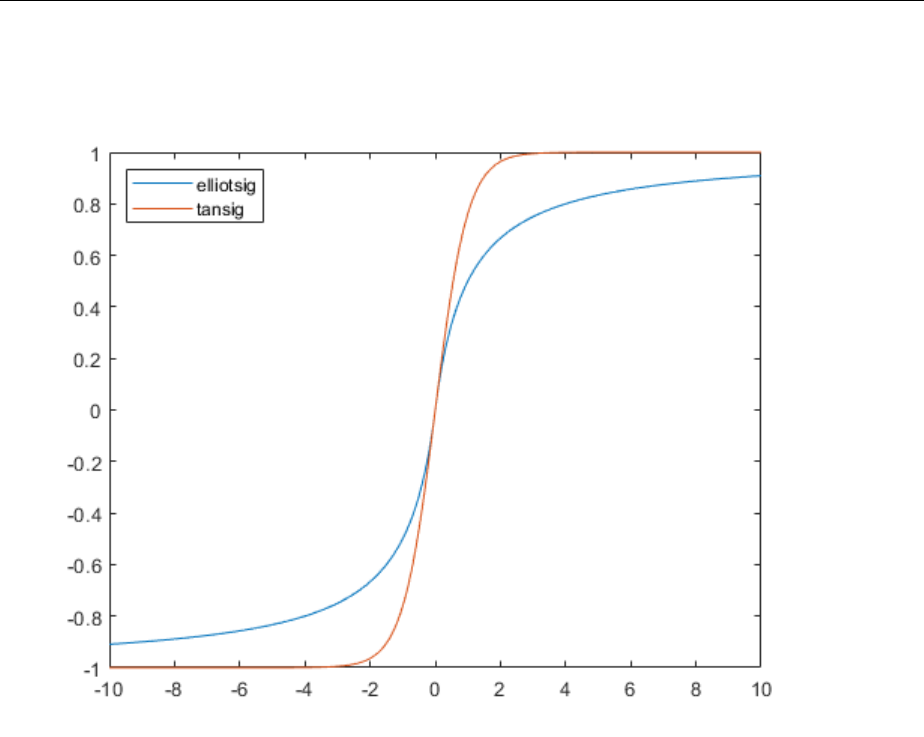

Fast Elliot Sigmoid ............................... 10-12

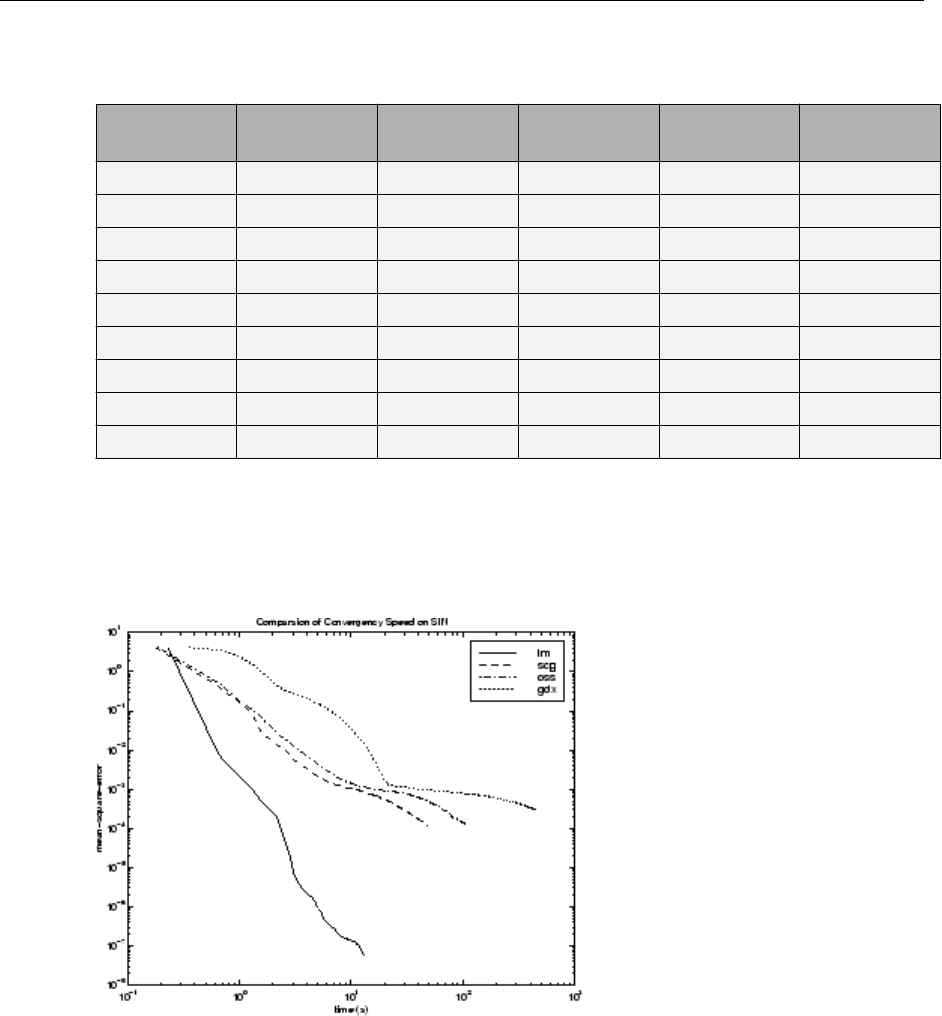

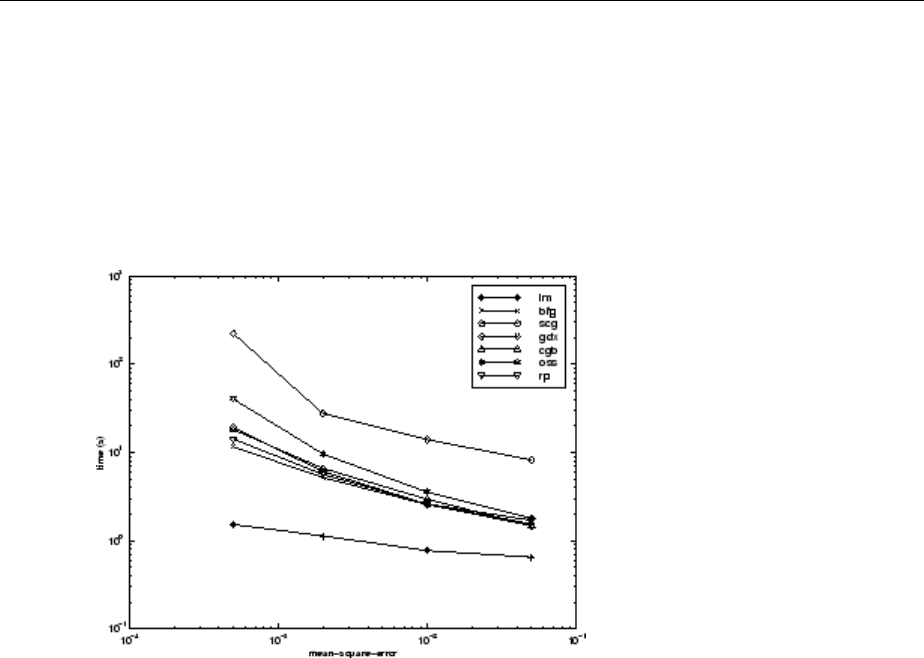

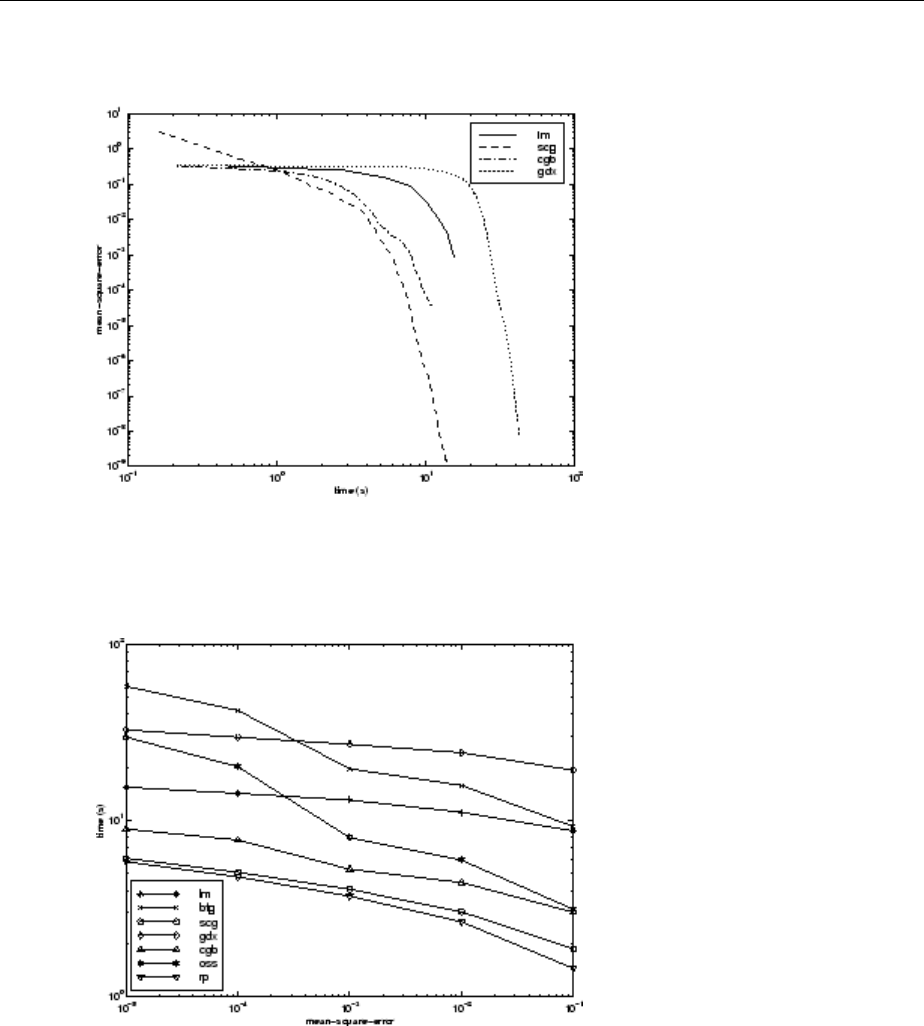

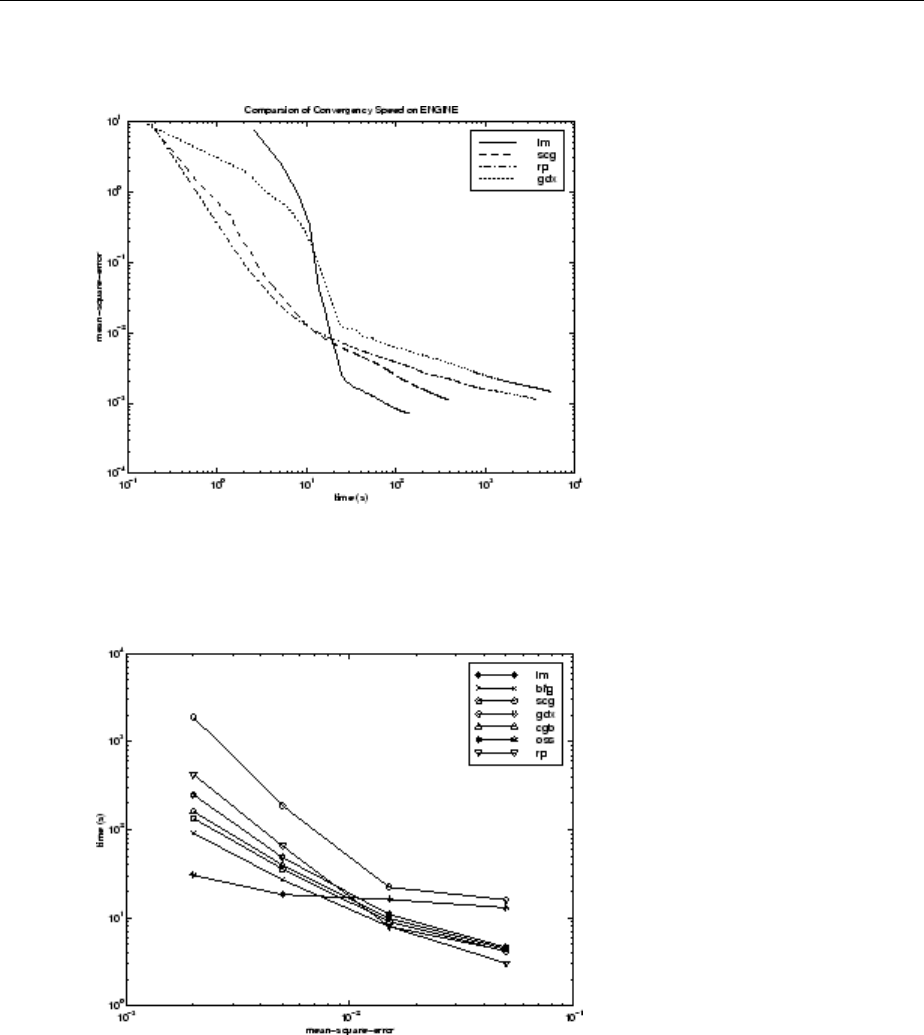

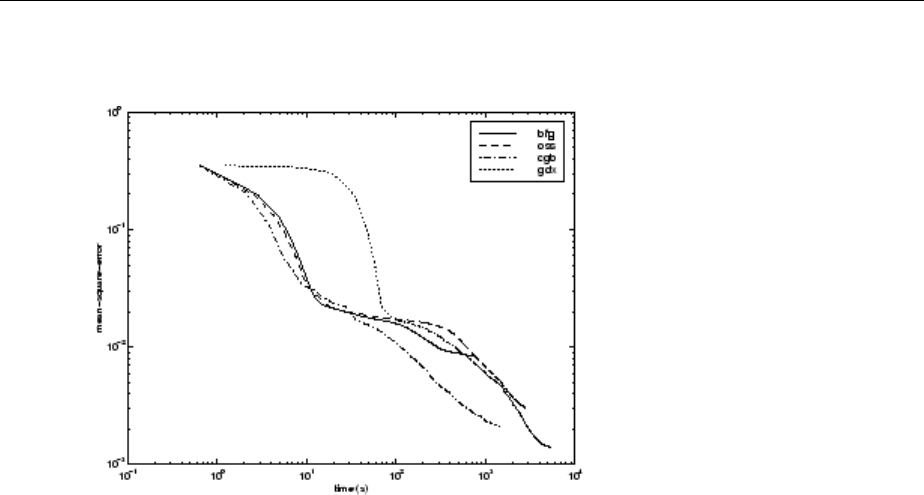

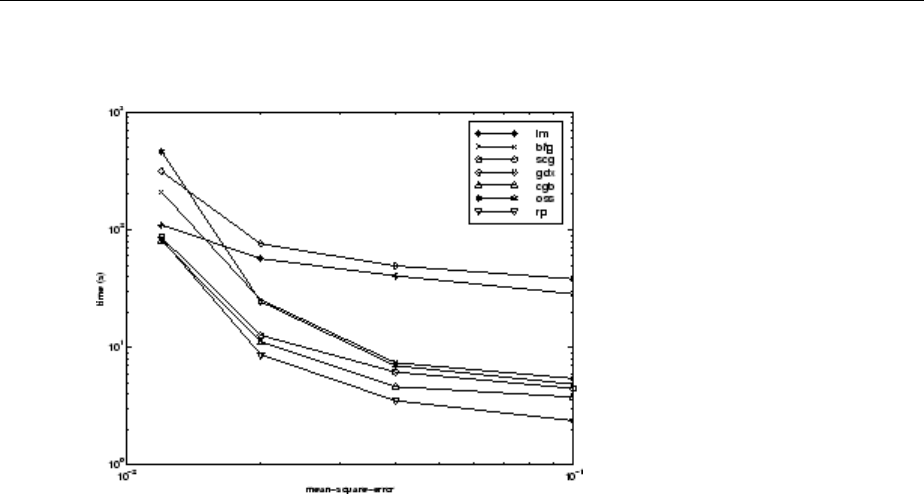

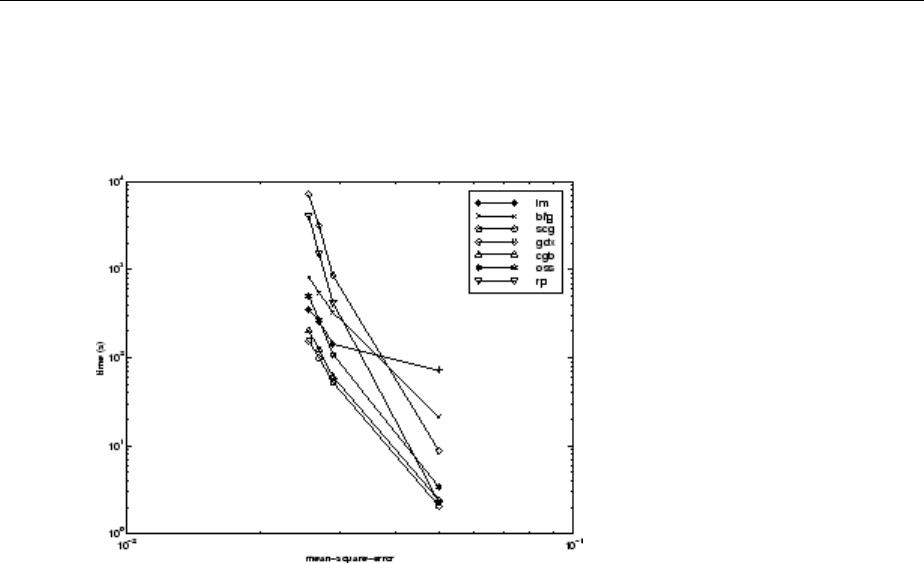

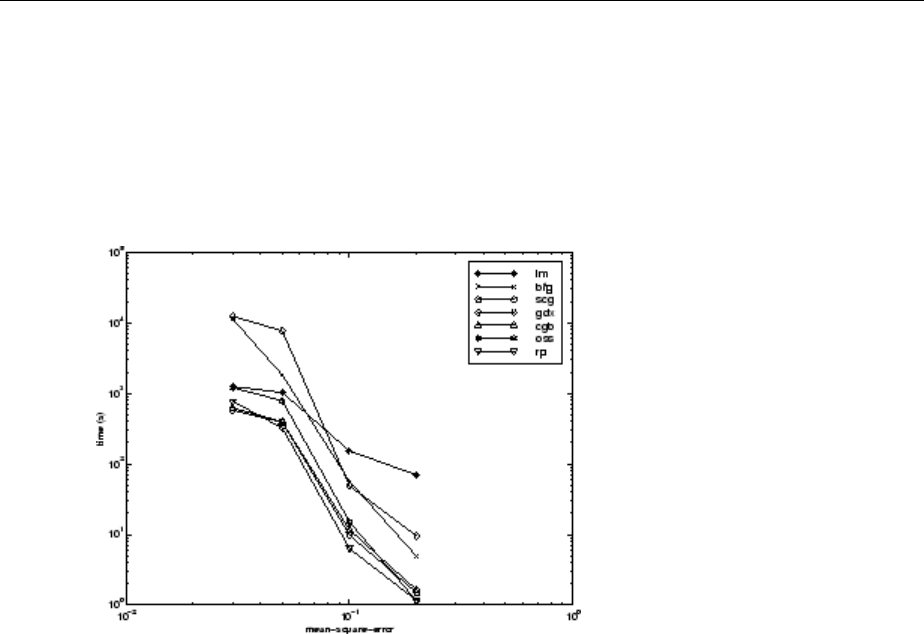

Choose a Multilayer Neural Network Training Function ... 10-16

SIN Data Set ................................... 10-17

PARITY Data Set ................................. 10-19

xiii

ENGINE Data Set ................................ 10-22

CANCER Data Set ............................... 10-24

CHOLESTEROL Data Set .......................... 10-26

DIABETES Data Set .............................. 10-28

Summary ...................................... 10-30

Improve Neural Network Generalization and Avoid Overttin

g.............................................. 10-32

Retraining Neural Networks ........................ 10-34

Multiple Neural Networks ......................... 10-35

Early Stopping .................................. 10-36

Index Data Division (divideind) ...................... 10-37

Random Data Division (dividerand) ................... 10-37

Block Data Division (divideblock) .................... 10-37

Interleaved Data Division (divideint) .................. 10-38

Regularization .................................. 10-38

Summary and Discussion of Early Stopping and

Regularization ................................ 10-41

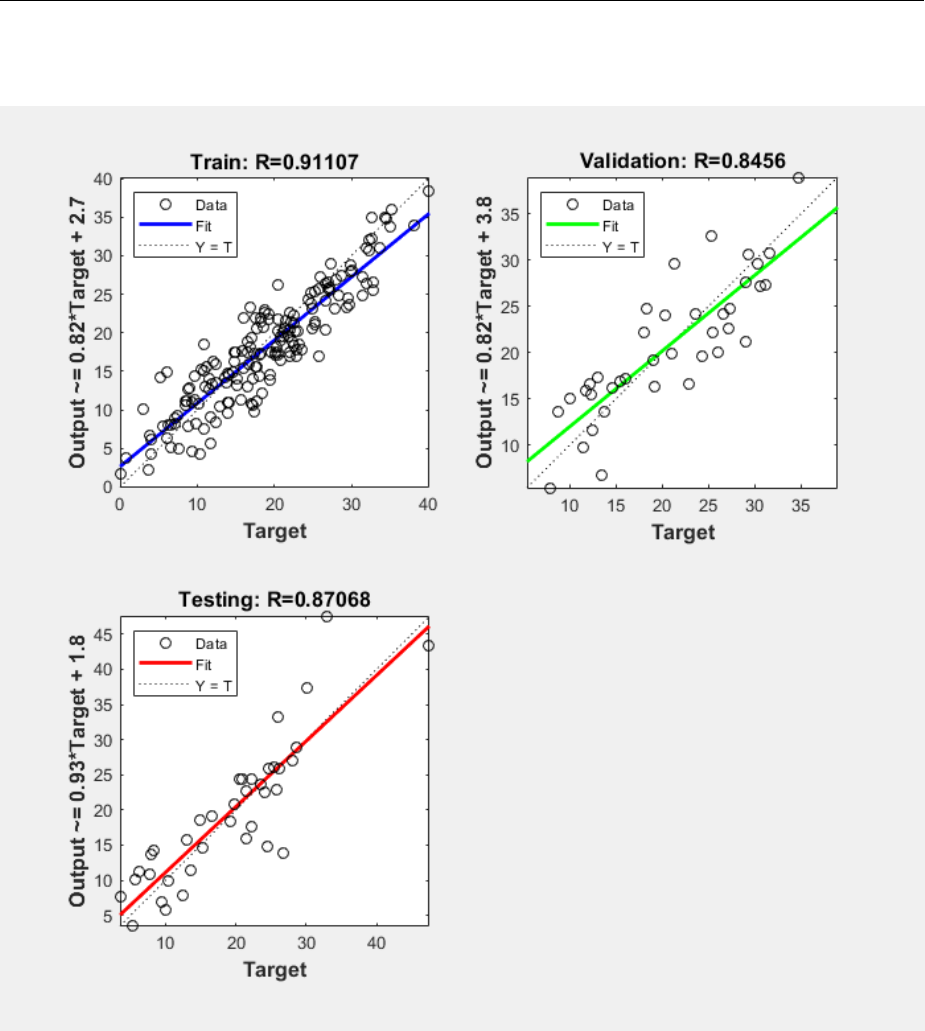

Posttraining Analysis (regression) .................... 10-43

Edit Shallow Neural Network Properties ................ 10-46

Custom Network ................................ 10-46

Network Denition ............................... 10-47

Network Behavior ............................... 10-57

Custom Neural Network Helper Functions .............. 10-60

Automatically Save Checkpoints During Neural Network

Training ........................................ 10-61

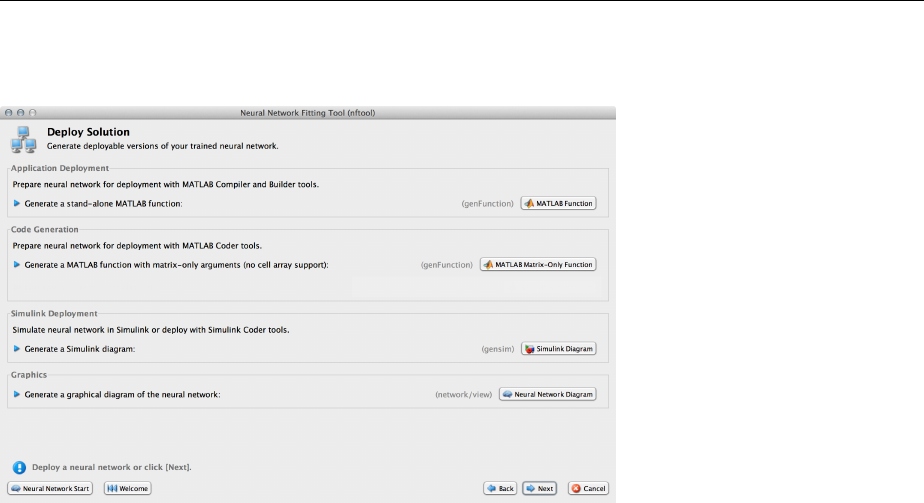

Deploy Trained Neural Network Functions .............. 10-63

Deployment Functions and Tools for Trained Networks .... 10-63

Generate Neural Network Functions for Application

Deployment .................................. 10-64

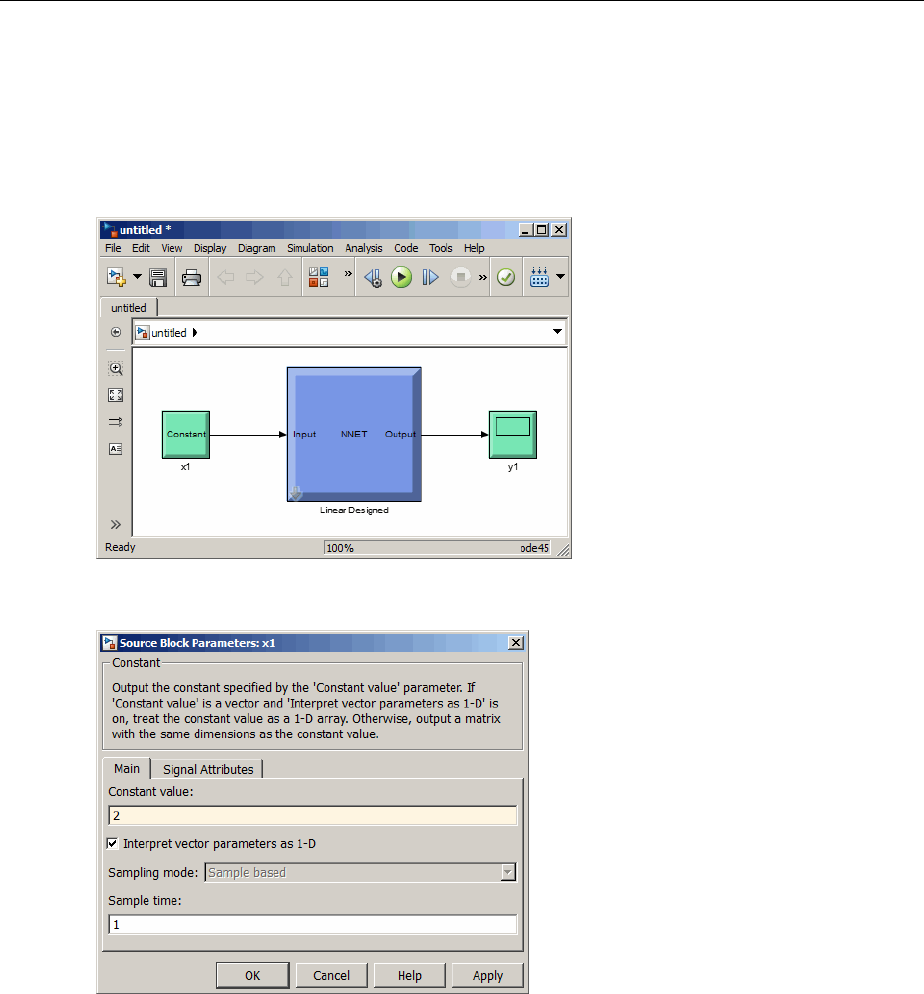

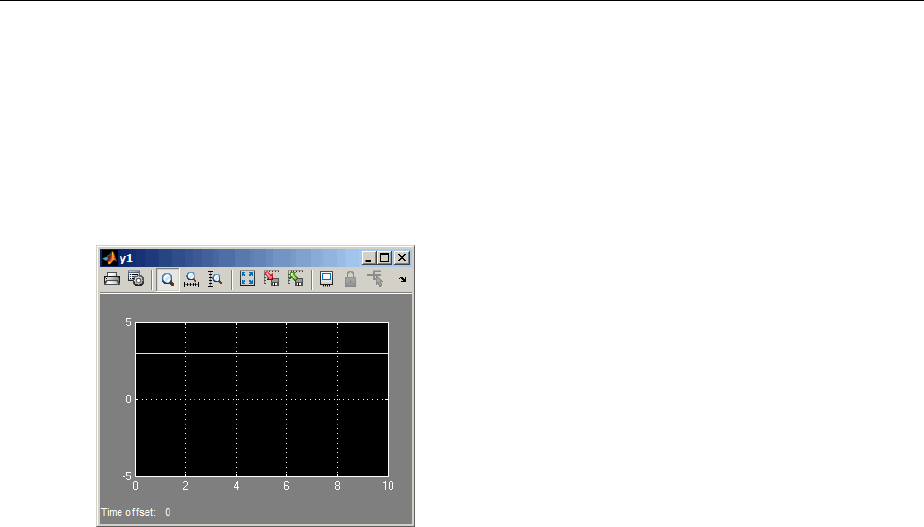

Generate Simulink Diagrams ....................... 10-67

Deploy Training of Neural Networks ................... 10-68

xiv Contents

Historical Neural Networks

11

Historical Neural Networks Overview ................... 11-2

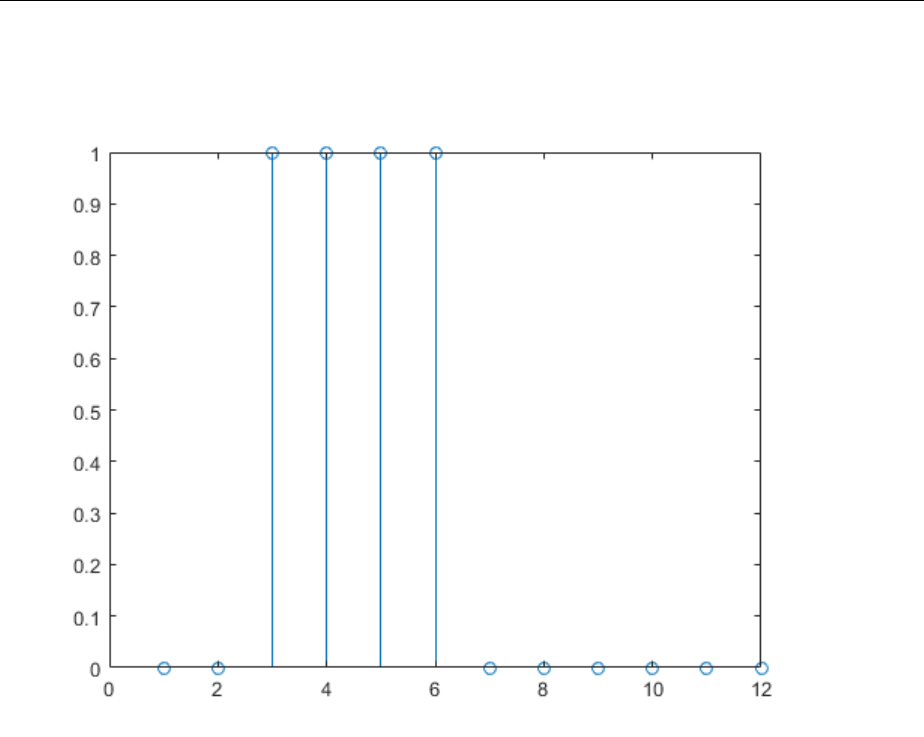

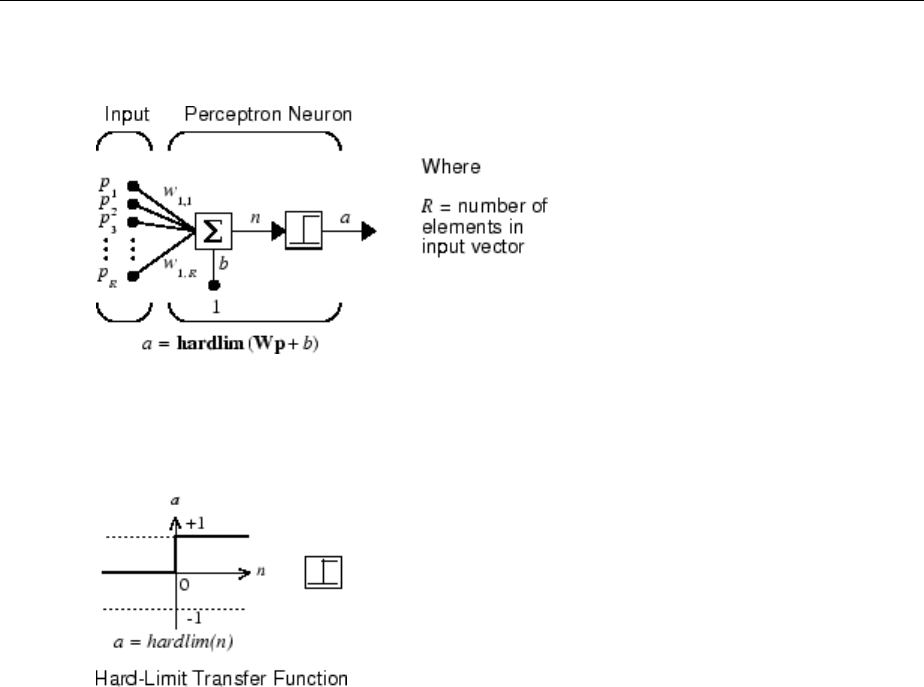

Perceptron Neural Networks .......................... 11-3

Neuron Model ................................... 11-3

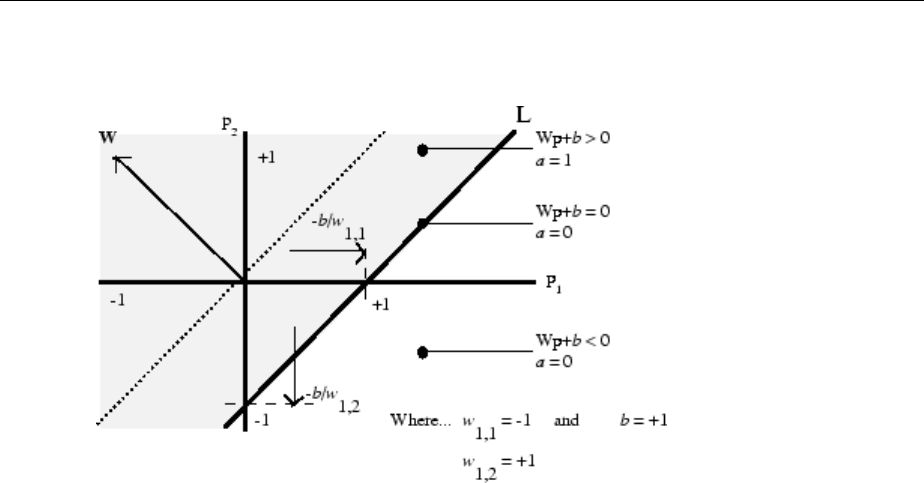

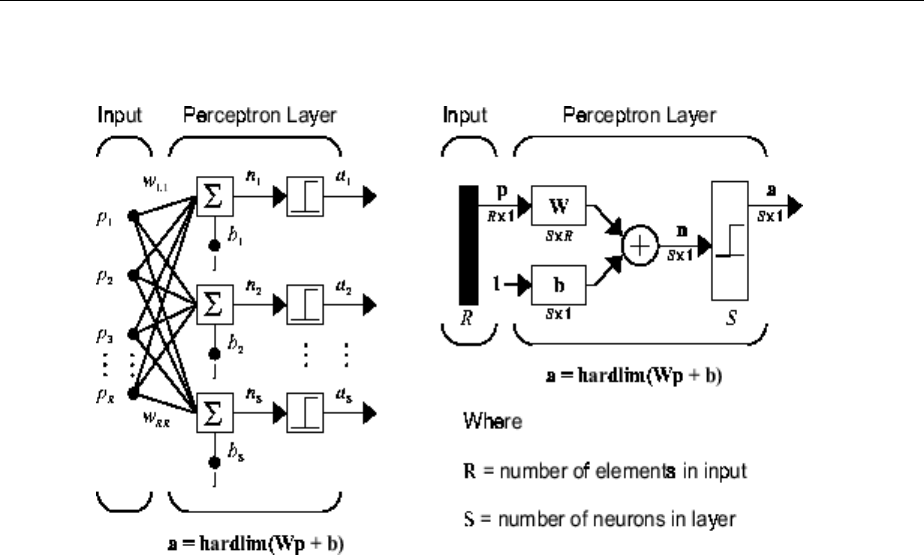

Perceptron Architecture ............................ 11-5

Create a Perceptron ............................... 11-6

Perceptron Learning Rule (learnp) .................... 11-8

Training (train) .................................. 11-10

Limitations and Cautions .......................... 11-15

Linear Neural Networks ............................. 11-18

Neuron Model .................................. 11-18

Network Architecture ............................. 11-19

Least Mean Square Error .......................... 11-22

Linear System Design (newlind) ..................... 11-23

Linear Networks with Delays ....................... 11-24

LMS Algorithm (learnwh) .......................... 11-26

Linear Classication (train) ........................ 11-28

Limitations and Cautions .......................... 11-30

Neural Network Object Reference

12

Neural Network Object Properties ...................... 12-2

General ........................................ 12-2

Architecture ..................................... 12-2

Subobject Structures .............................. 12-6

Functions ....................................... 12-8

Weight and Bias Values ............................ 12-12

Neural Network Subobject Properties .................. 12-14

Inputs ........................................ 12-14

Layers ........................................ 12-16

Outputs ....................................... 12-22

Biases ........................................ 12-24

Input Weights ................................... 12-25

Layer Weights .................................. 12-27

xv

Bibliography

13

Neural Network Toolbox Bibliography ................... 13-2

Mathematical Notation

A

Mathematics and Code Equivalents ...................... A-2

Mathematics Notation to MATLAB Notation .............. A-2

Figure Notation ................................... A-2

Neural Network Blocks for the Simulink Environment

B

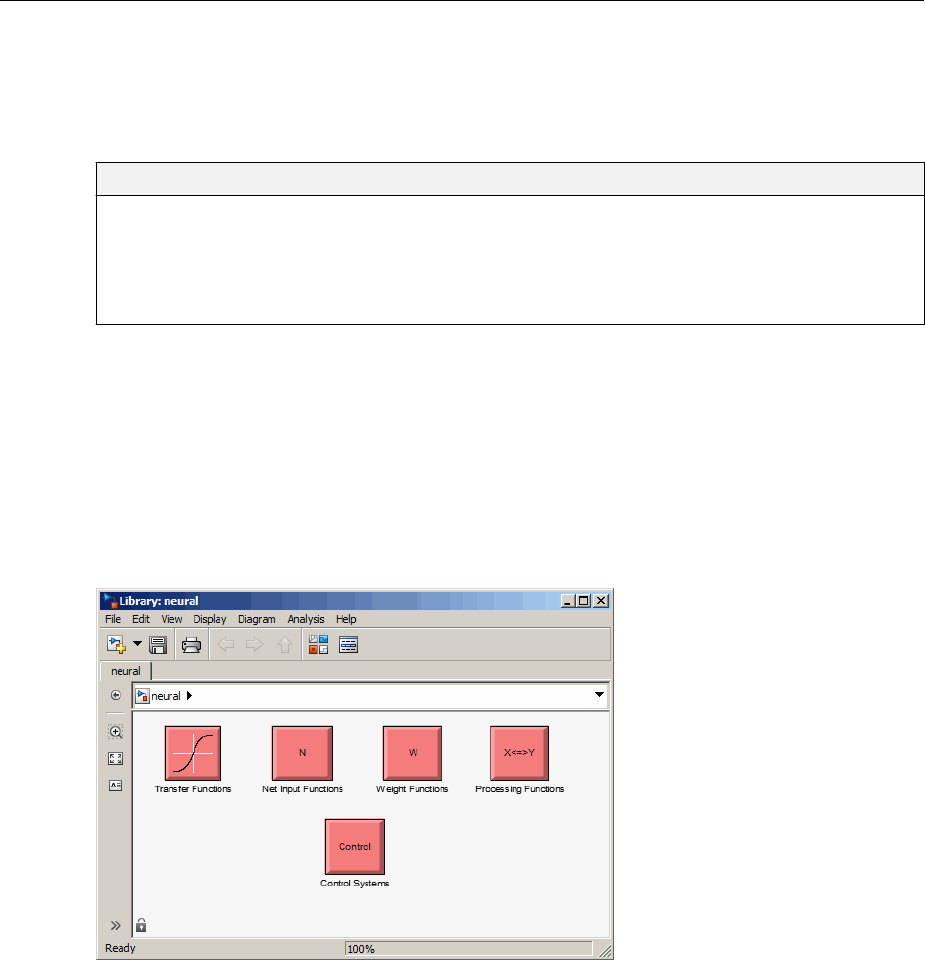

Neural Network Simulink Block Library .................. B-2

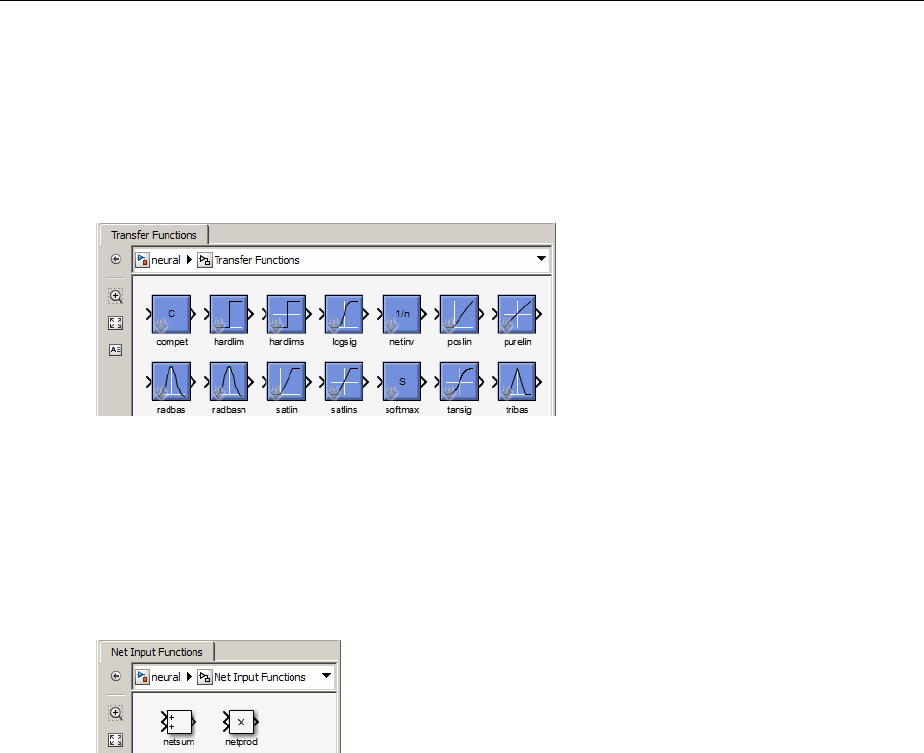

Transfer Function Blocks ............................ B-3

Net Input Blocks .................................. B-3

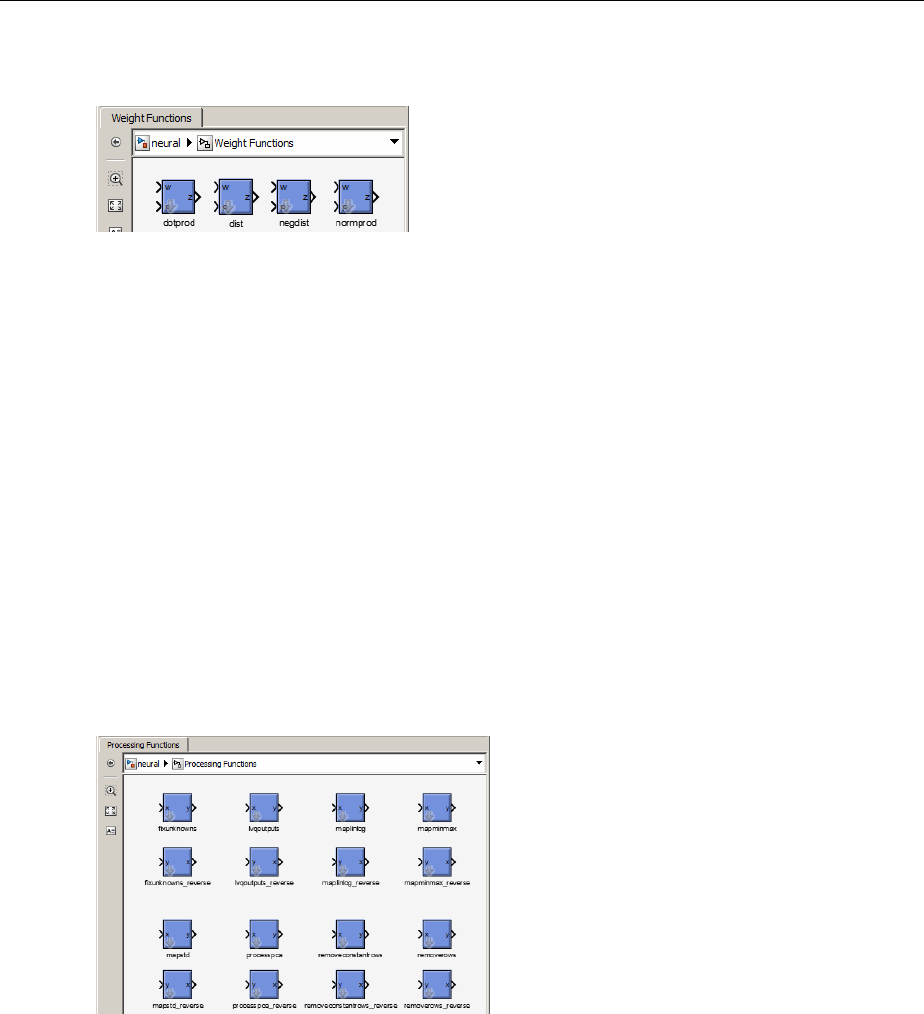

Weight Blocks .................................... B-3

Processing Blocks ................................. B-4

Deploy Neural Network Simulink Diagrams ............... B-5

Example ......................................... B-5

Suggested Exercises ............................... B-7

Generate Functions and Objects ....................... B-8

Code Notes

C

Neural Network Toolbox Data Conventions ................ C-2

Dimensions ...................................... C-2

Variables ........................................ C-2

xvi Contents

Neural Network Toolbox Design

Book

The developers of the Neural Network Toolbox software have written a textbook, Neural

Network Design (Hagan, Demuth, and Beale, ISBN 0-9717321-0-8). The book presents the

theory of neural networks, discusses their design and application, and makes

considerable use of the MATLAB® environment and Neural Network Toolbox software.

Example programs from the book are used in various sections of this documentation. (You

can nd all the book example programs in the Neural Network Toolbox software by typing

nnd.)

Obtain this book from John Stovall at (303) 492-3648, or by email at

John.Stovall@colorado.edu.

The Neural Network Design textbook includes:

• An Instructor's Manual for those who adopt the book for a class

• Transparency Masters for class use

If you are teaching a class and want an Instructor's Manual (with solutions to the book

exercises), contact John Stovall at (303) 492-3648, or by email at

John.Stovall@colorado.edu

To look at sample chapters of the book and to obtain Transparency Masters, go directly to

the Neural Network Design page at:

http://hagan.okstate.edu/nnd.html

From this link, you can obtain sample book chapters in PDF format and you can download

the Transparency Masters by clicking Transparency Masters (3.6MB).

You can get the Transparency Masters in PowerPoint or PDF format.

xvii

Neural Network Objects, Data, and

Training Styles

•“Workow for Neural Network Design” on page 1-2

• “Four Levels of Neural Network Design” on page 1-4

• “Neuron Model” on page 1-5

• “Neural Network Architectures” on page 1-11

• “Create Neural Network Object” on page 1-17

•“Congure Neural Network Inputs and Outputs” on page 1-21

• “Understanding Neural Network Toolbox Data Structures” on page 1-23

• “Neural Network Training Concepts” on page 1-28

1

Workow for Neural Network Design

The work ow for the neural network design process has seven primary steps. Referenced

topics discuss the basic ideas behind steps 2, 3, and 5.

1Collect data

2Create the network — “Create Neural Network Object” on page 1-17

3Congure the network — “Congure Neural Network Inputs and Outputs” on page 1-

21

4Initialize the weights and biases

5Train the network — “Neural Network Training Concepts” on page 1-28

6Validate the network

7Use the network

Data collection in step 1 generally occurs outside the framework of Neural Network

Toolbox software, but it is discussed in general terms in “Multilayer Neural Networks and

Backpropagation Training” on page 4-2. Details of the other steps and discussions of

steps 4, 6, and 7, are discussed in topics specic to the type of network.

The Neural Network Toolbox software uses the network object to store all of the

information that denes a neural network. This topic describes the basic components of a

neural network and shows how they are created and stored in the network object.

After a neural network has been created, it needs to be congured and then trained.

Conguration involves arranging the network so that it is compatible with the problem

you want to solve, as dened by sample data. After the network has been congured, the

adjustable network parameters (called weights and biases) need to be tuned, so that the

network performance is optimized. This tuning process is referred to as training the

network. Conguration and training require that the network be provided with example

data. This topic shows how to format the data for presentation to the network. It also

explains network conguration and the two forms of network training: incremental

training and batch training.

1Neural Network Objects, Data, and Training Styles

1-2

Four Levels of Neural Network Design

There are four dierent levels at which the Neural Network Toolbox software can be

used. The rst level is represented by the GUIs that are described in “Getting Started

with Neural Network Toolbox”. These provide a quick way to access the power of the

toolbox for many problems of function tting, pattern recognition, clustering and time

series analysis.

The second level of toolbox use is through basic command-line operations. The command-

line functions use simple argument lists with intelligent default settings for function

parameters. (You can override all of the default settings, for increased functionality.) This

topic, and the ones that follow, concentrate on command-line operations.

The GUIs described in Getting Started can automatically generate MATLAB code les

with the command-line implementation of the GUI operations. This provides a nice

introduction to the use of the command-line functionality.

A third level of toolbox use is customization of the toolbox. This advanced capability

allows you to create your own custom neural networks, while still having access to the full

functionality of the toolbox.

The fourth level of toolbox usage is the ability to modify any of the code les contained in

the toolbox. Every computational component is written in MATLAB code and is fully

accessible.

The rst level of toolbox use (through the GUIs) is described in Getting Started which also

introduces command-line operations. The following topics will discuss the command-line

operations in more detail. The customization of the toolbox is described in “Dene

Shallow Neural Network Architectures”.

See Also

More About

•“Workow for Neural Network Design” on page 1-2

1Neural Network Objects, Data, and Training Styles

1-4

Neuron Model

In this section...

“Simple Neuron” on page 1-5

“Transfer Functions” on page 1-6

“Neuron with Vector Input” on page 1-7

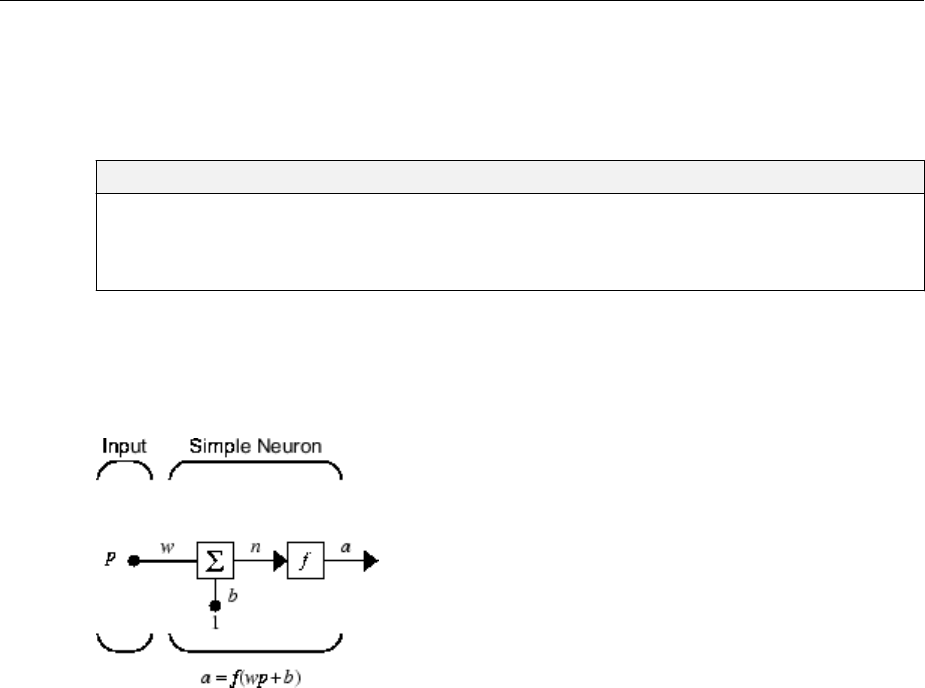

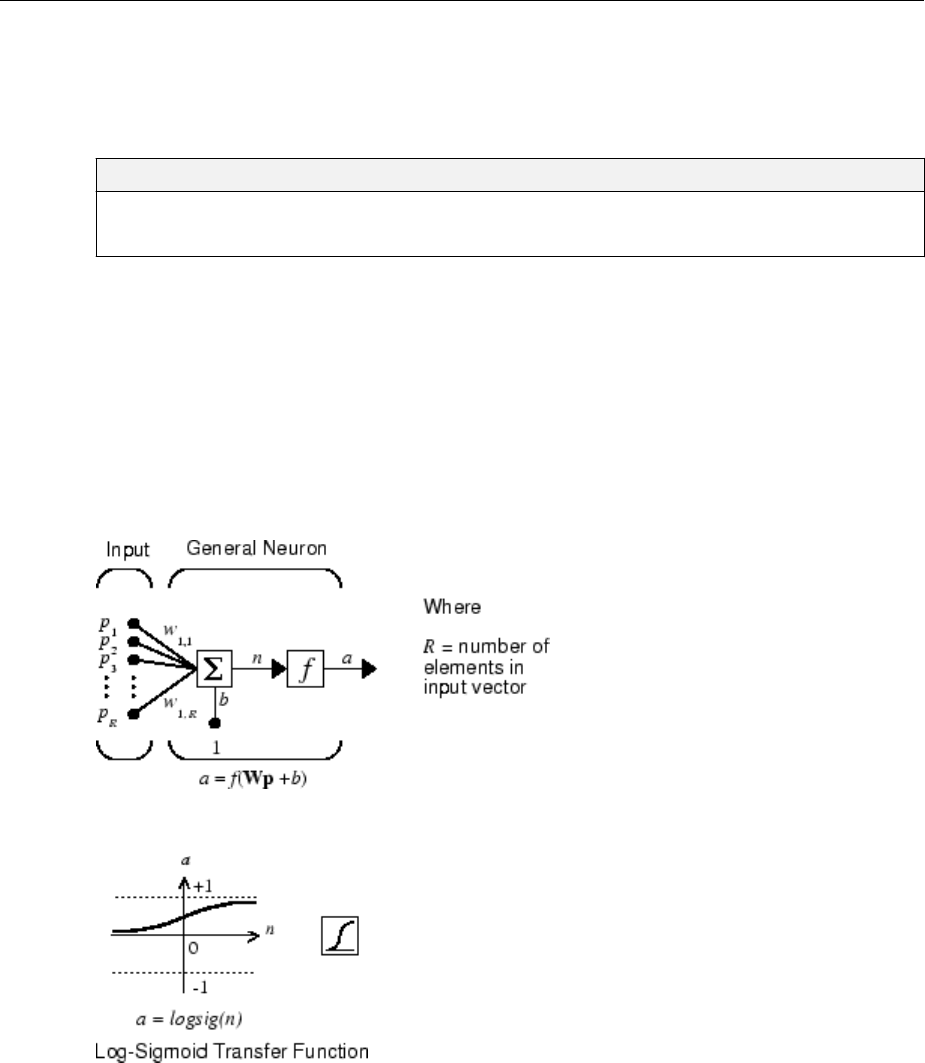

Simple Neuron

The fundamental building block for neural networks is the single-input neuron, such as

this example.

There are three distinct functional operations that take place in this example neuron.

First, the scalar input p is multiplied by the scalar weight w to form the product wp, again

a scalar. Second, the weighted input wp is added to the scalar bias b to form the net input

n. (In this case, you can view the bias as shifting the function f to the left by an amount b.

The bias is much like a weight, except that it has a constant input of 1.) Finally, the net

input is passed through the transfer function f, which produces the scalar output a. The

names given to these three processes are: the weight function, the net input function and

the transfer function.

For many types of neural networks, the weight function is a product of a weight times the

input, but other weight functions (e.g., the distance between the weight and the input, |w

− p|) are sometimes used. (For a list of weight functions, type help nnweight.) The

most common net input function is the summation of the weighted inputs with the bias,

but other operations, such as multiplication, can be used. (For a list of net input functions,

type help nnnetinput.) “Introduction to Radial Basis Neural Networks” on page 7-2

Neuron Model

1-5

discusses how distance can be used as the weight function and multiplication can be used

as the net input function. There are also many types of transfer functions. Examples of

various transfer functions are in “Transfer Functions” on page 1-6. (For a list of

transfer functions, type help nntransfer.)

Note that w and b are both adjustable scalar parameters of the neuron. The central idea

of neural networks is that such parameters can be adjusted so that the network exhibits

some desired or interesting behavior. Thus, you can train the network to do a particular

job by adjusting the weight or bias parameters.

All the neurons in the Neural Network Toolbox software have provision for a bias, and a

bias is used in many of the examples and is assumed in most of this toolbox. However, you

can omit a bias in a neuron if you want.

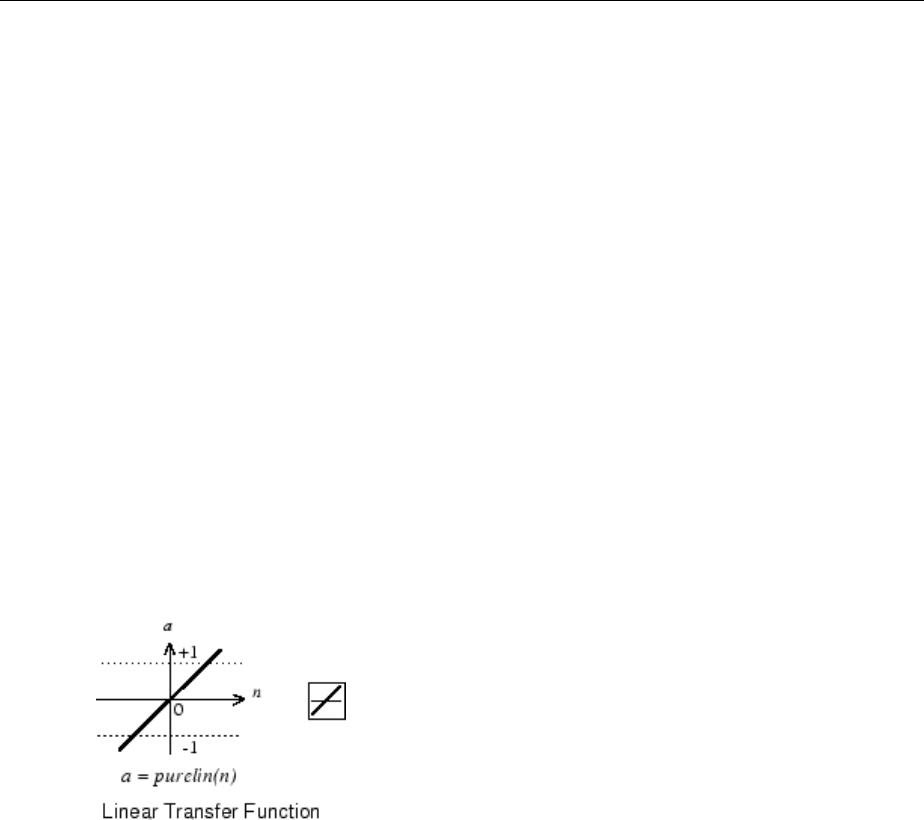

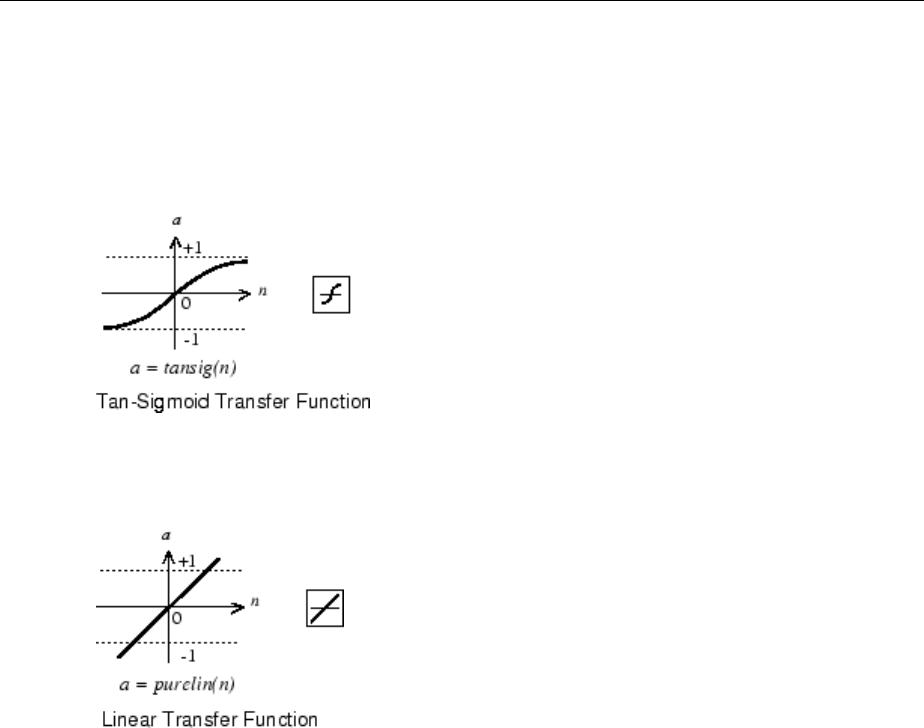

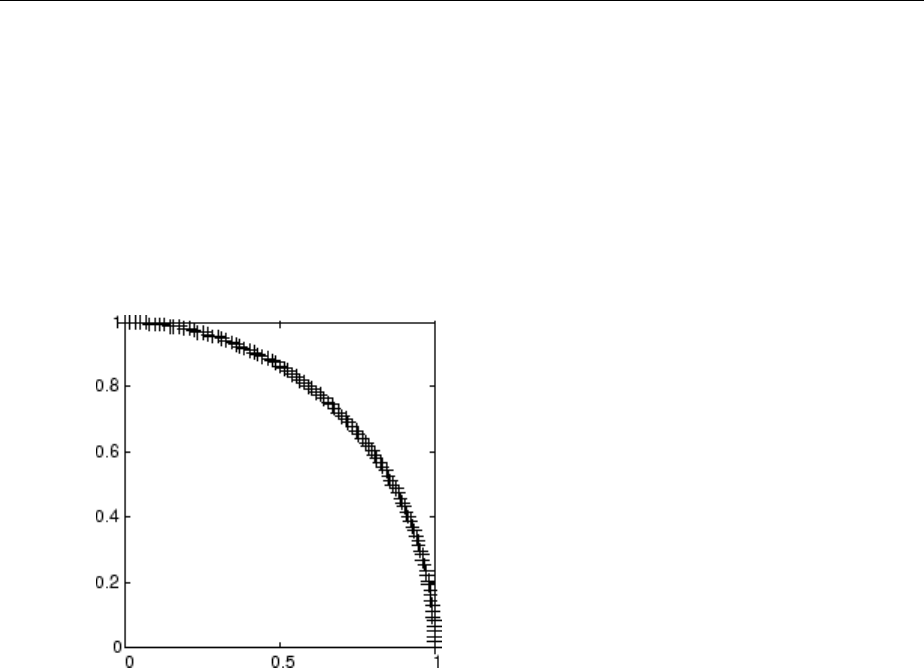

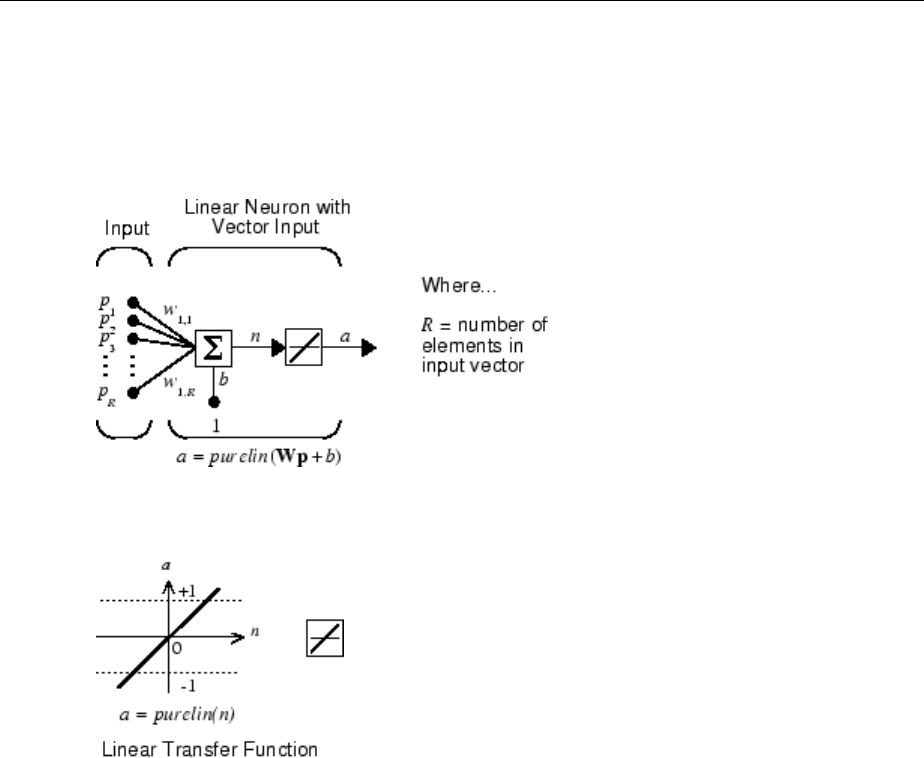

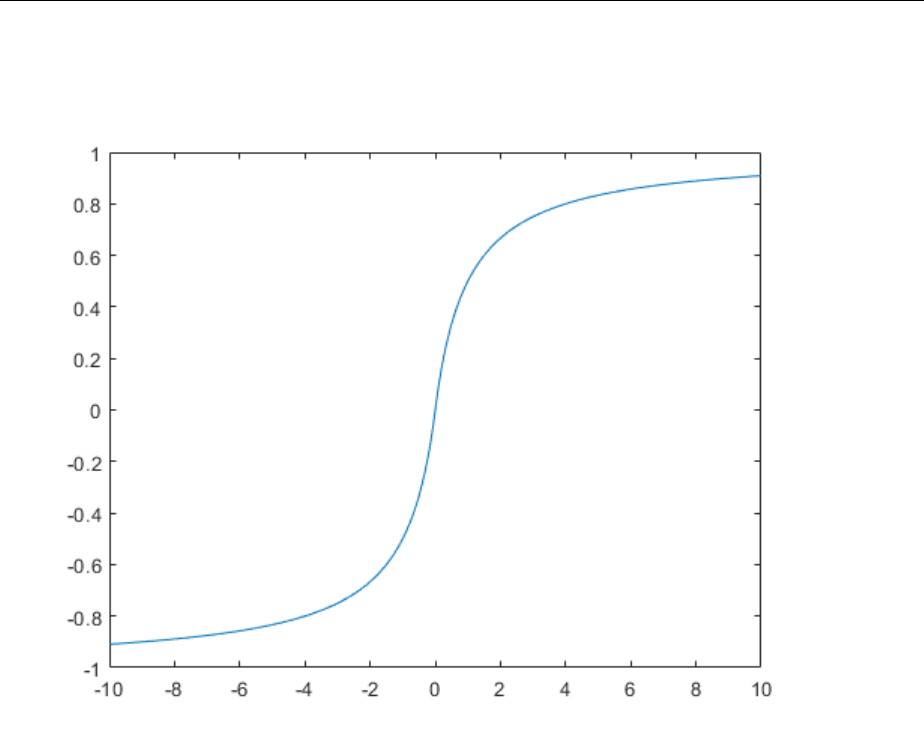

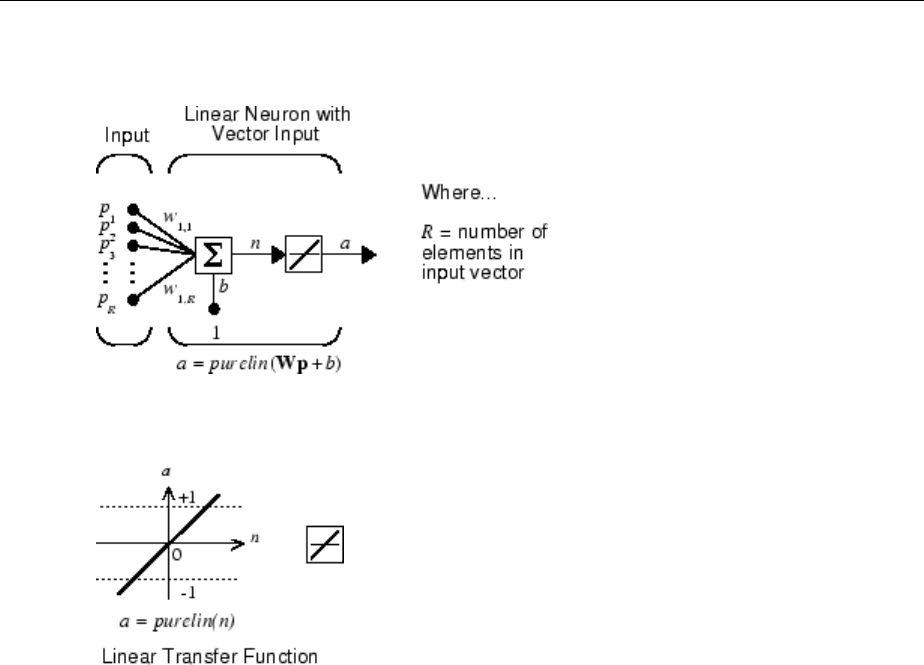

Transfer Functions

Many transfer functions are included in the Neural Network Toolbox software.

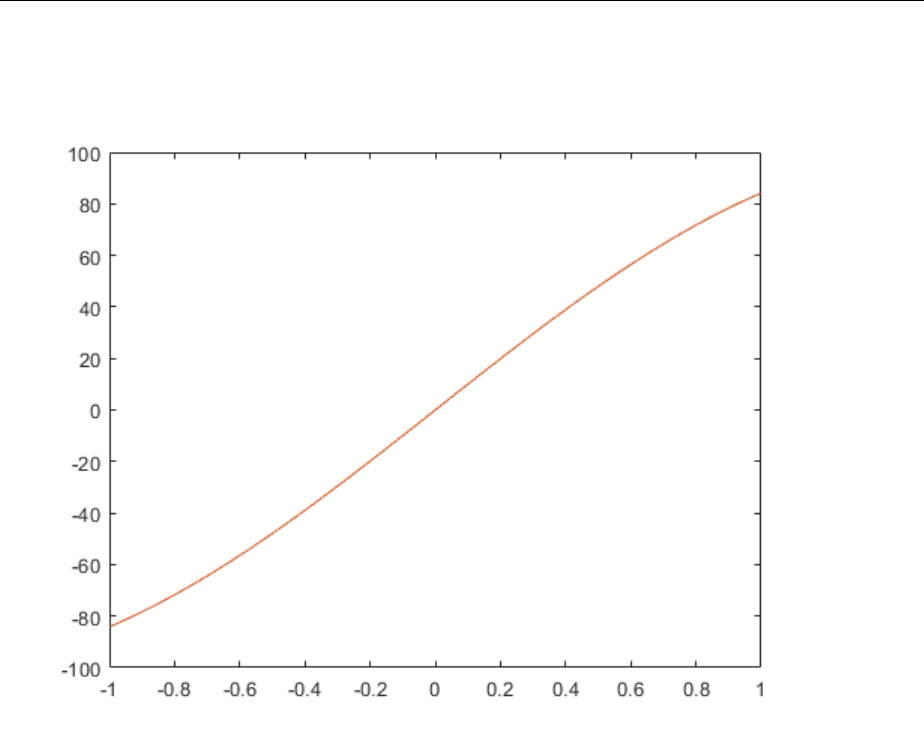

Two of the most commonly used functions are shown below.

The following gure illustrates the linear transfer function.

Neurons of this type are used in the nal layer of multilayer networks that are used as

function approximators. This is shown in “Multilayer Neural Networks and

Backpropagation Training” on page 4-2.

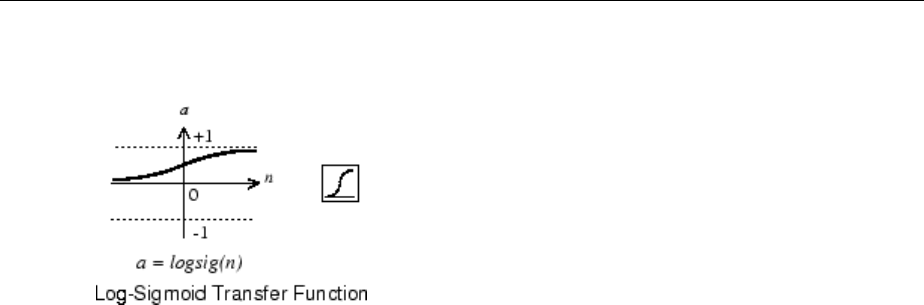

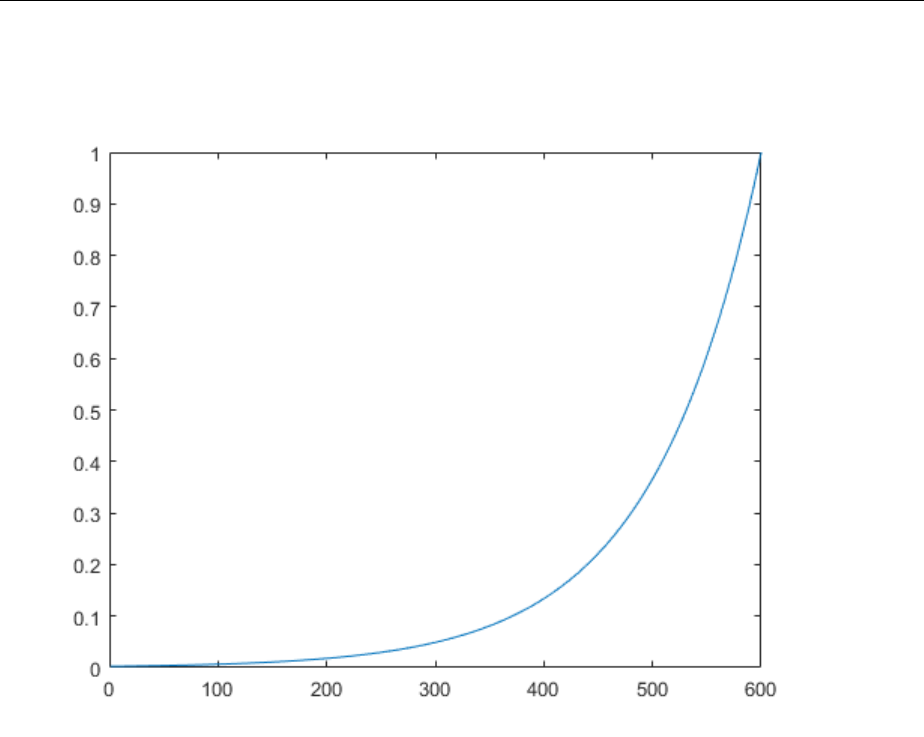

The sigmoid transfer function shown below takes the input, which can have any value

between plus and minus innity, and squashes the output into the range 0 to 1.

1Neural Network Objects, Data, and Training Styles

1-6

This transfer function is commonly used in the hidden layers of multilayer networks, in

part because it is dierentiable.

The symbol in the square to the right of each transfer function graph shown above

represents the associated transfer function. These icons replace the general f in the

network diagram blocks to show the particular transfer function being used.

For a complete list of transfer functions, type help nntransfer. You can also specify

your own transfer functions.

You can experiment with a simple neuron and various transfer functions by running the

example program nnd2n1.

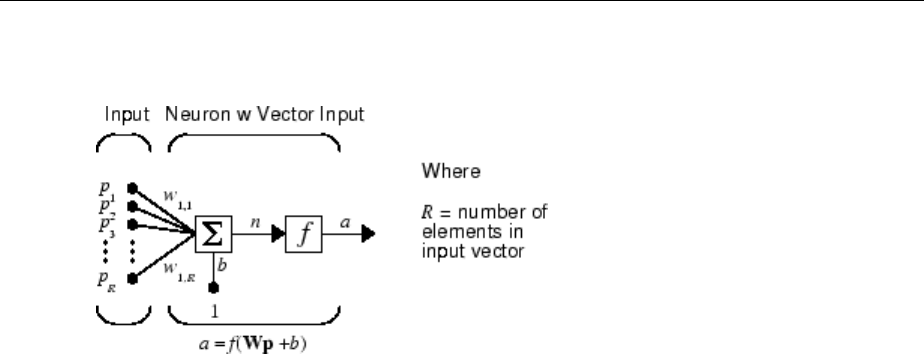

Neuron with Vector Input

The simple neuron can be extended to handle inputs that are vectors. A neuron with a

single R-element input vector is shown below. Here the individual input elements

p p pR1 2

,,…

are multiplied by weights

w w w R1 1 1 2 1, , ,

, ,…

and the weighted values are fed to the summing junction. Their sum is simply Wp, the dot

product of the (single row) matrix W and the vector p. (There are other weight functions,

in addition to the dot product, such as the distance between the row of the weight matrix

and the input vector, as in “Introduction to Radial Basis Neural Networks” on page 7-

2.)

Neuron Model

1-7

The neuron has a bias b, which is summed with the weighted inputs to form the net input

n. (In addition to the summation, other net input functions can be used, such as the

multiplication that is used in “Introduction to Radial Basis Neural Networks” on page 7-

2.) The net input n is the argument of the transfer function f.

n w p w p w p b

R R

= + + + +

1 1 1 1 2 2 1, , ,

…

This expression can, of course, be written in MATLAB code as

n = W*p + b

However, you will seldom be writing code at this level, for such code is already built into

functions to dene and simulate entire networks.

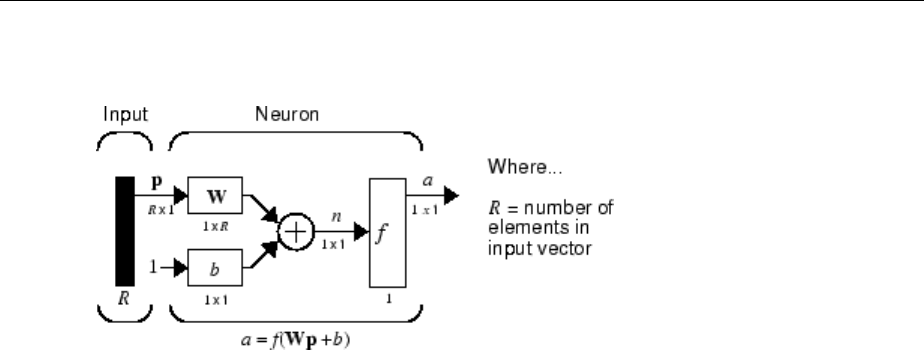

Abbreviated Notation

The gure of a single neuron shown above contains a lot of detail. When you consider

networks with many neurons, and perhaps layers of many neurons, there is so much

detail that the main thoughts tend to be lost. Thus, the authors have devised an

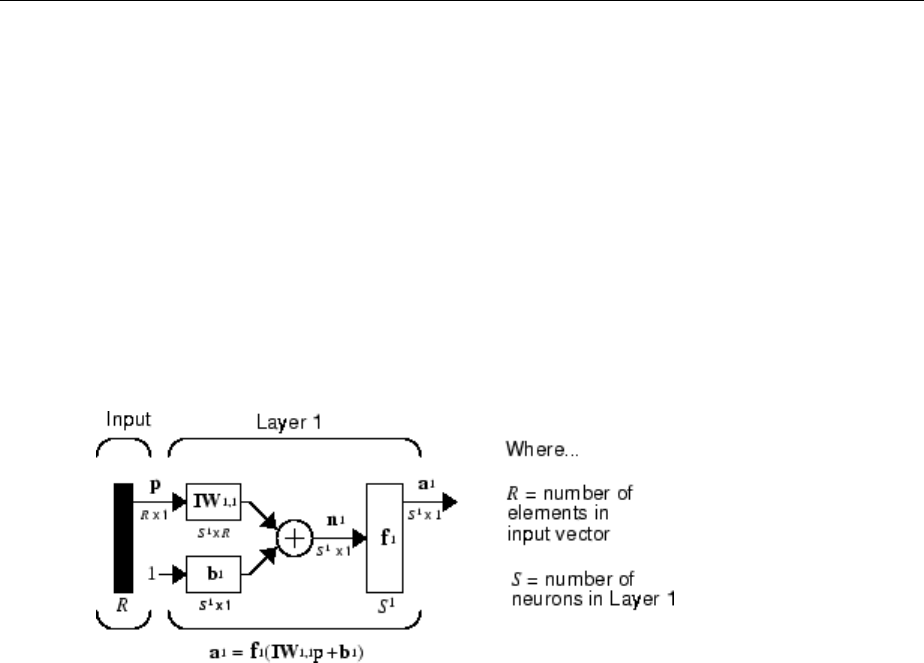

abbreviated notation for an individual neuron. This notation, which is used later in

circuits of multiple neurons, is shown here.

1Neural Network Objects, Data, and Training Styles

1-8

Here the input vector p is represented by the solid dark vertical bar at the left. The

dimensions of p are shown below the symbol p in the gure as R × 1. (Note that a capital

letter, such as R in the previous sentence, is used when referring to the size of a vector.)

Thus, p is a vector of R input elements. These inputs postmultiply the single-row, R-

column matrix W. As before, a constant 1 enters the neuron as an input and is multiplied

by a scalar bias b. The net input to the transfer function f is n, the sum of the bias b and

the product Wp. This sum is passed to the transfer function f to get the neuron's output a,

which in this case is a scalar. Note that if there were more than one neuron, the network

output would be a vector.

A layer of a network is dened in the previous gure. A layer includes the weights, the

multiplication and summing operations (here realized as a vector product Wp), the bias b,

and the transfer function f. The array of inputs, vector p, is not included in or called a

layer.

As with the “Simple Neuron” on page 1-5, there are three operations that take place in

the layer: the weight function (matrix multiplication, or dot product, in this case), the net

input function (summation, in this case), and the transfer function.

Each time this abbreviated network notation is used, the sizes of the matrices are shown

just below their matrix variable names. This notation will allow you to understand the

architectures and follow the matrix mathematics associated with them.

As discussed in “Transfer Functions” on page 1-6, when a specic transfer function is to

be used in a gure, the symbol for that transfer function replaces the f shown above. Here

are some examples.

Neuron Model

1-9

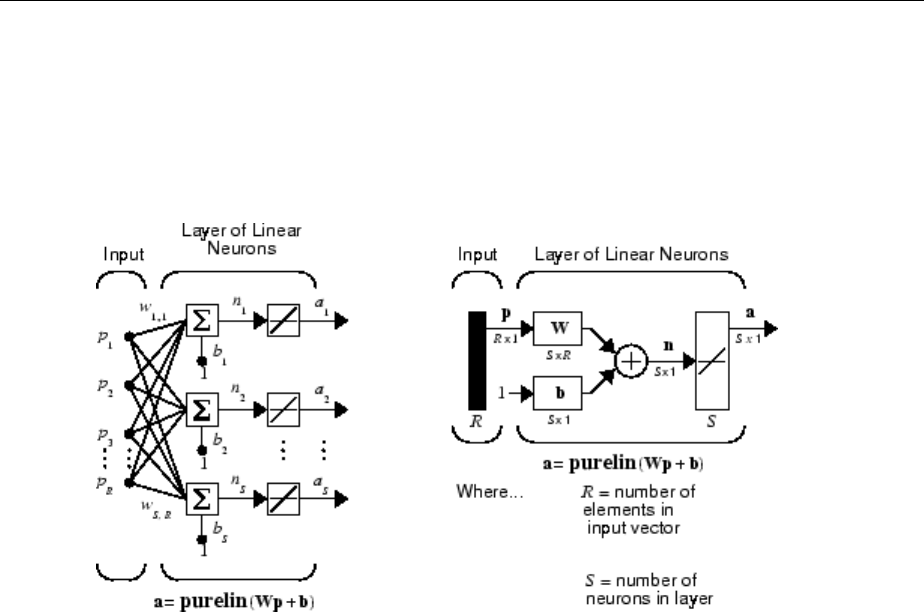

Neural Network Architectures

In this section...

“One Layer of Neurons” on page 1-11

“Multiple Layers of Neurons” on page 1-13

“Input and Output Processing Functions” on page 1-15

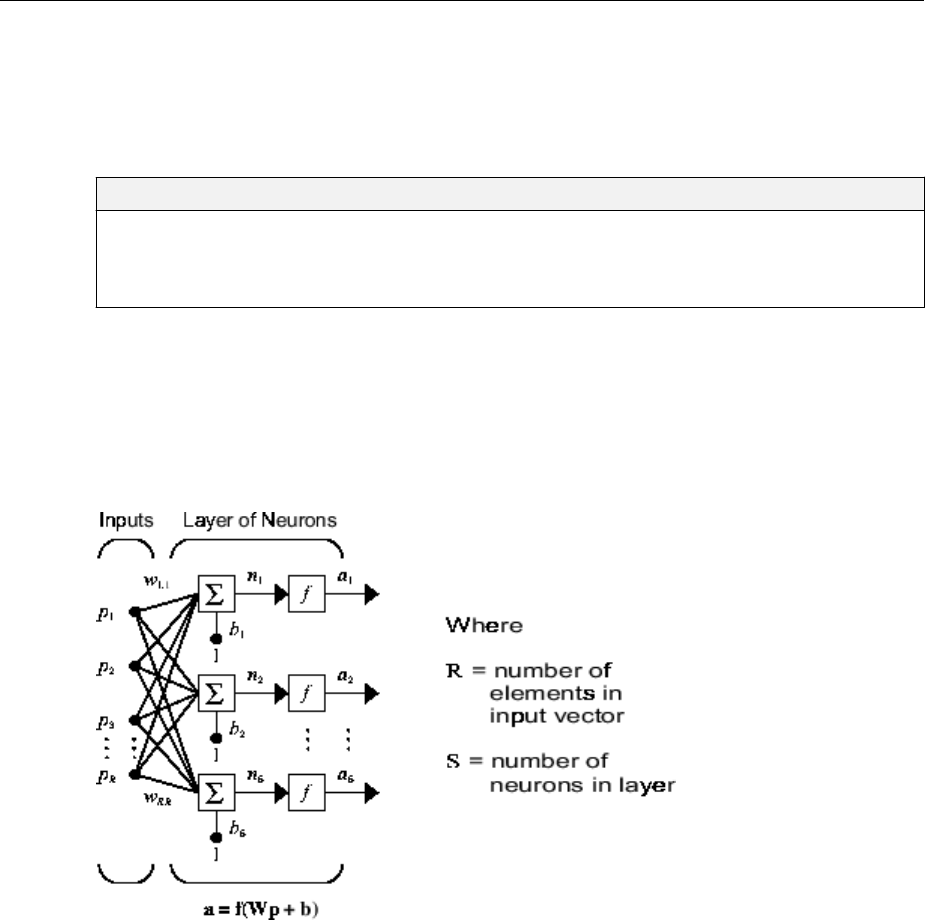

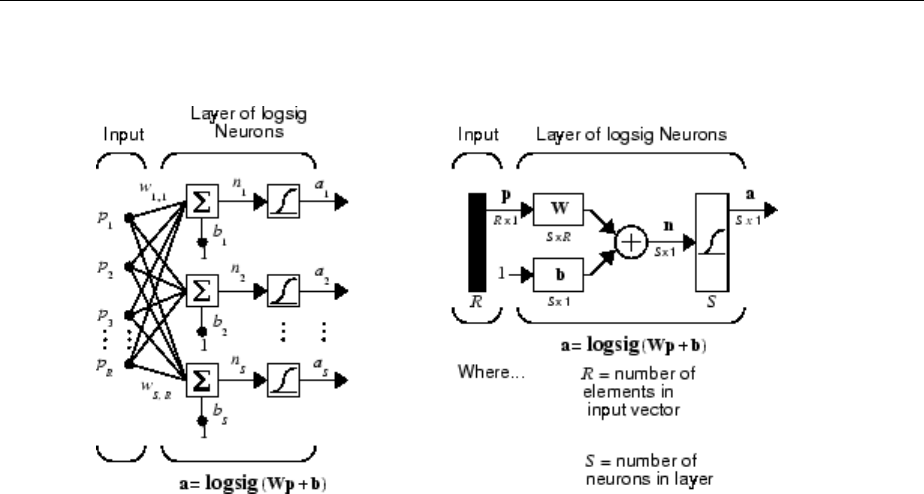

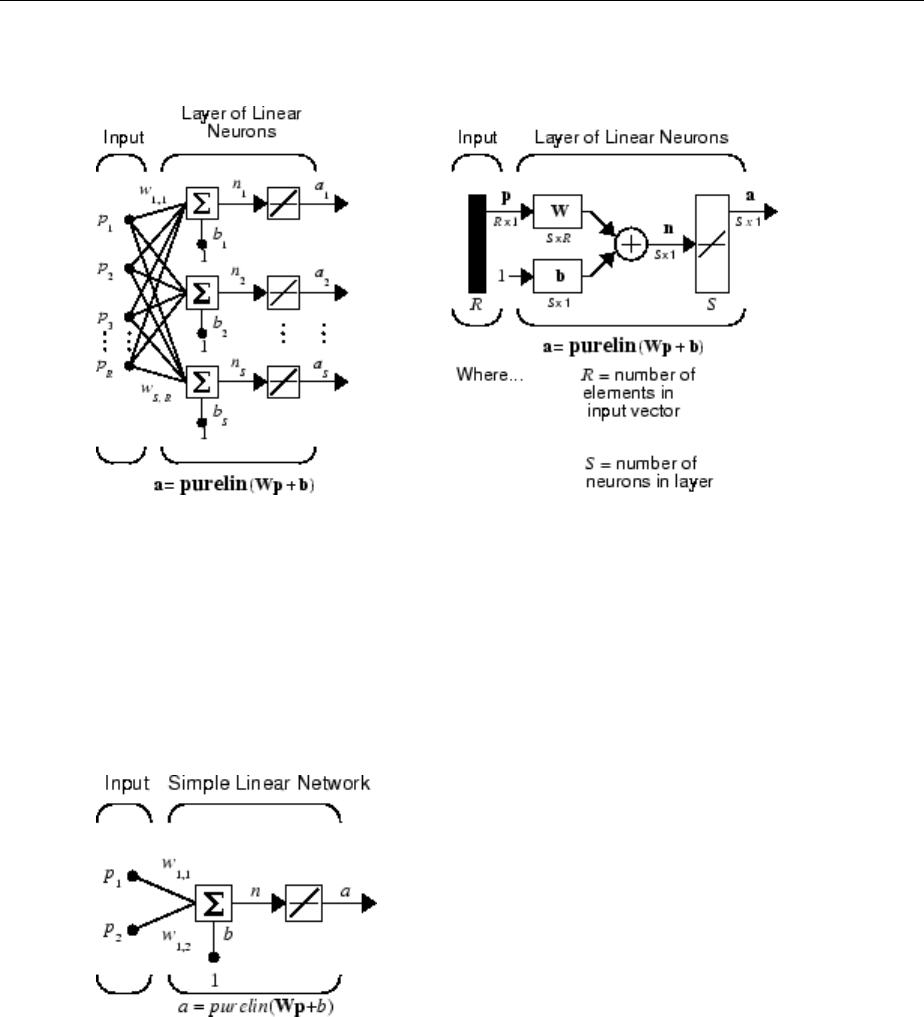

Two or more of the neurons shown earlier can be combined in a layer, and a particular

network could contain one or more such layers. First consider a single layer of neurons.

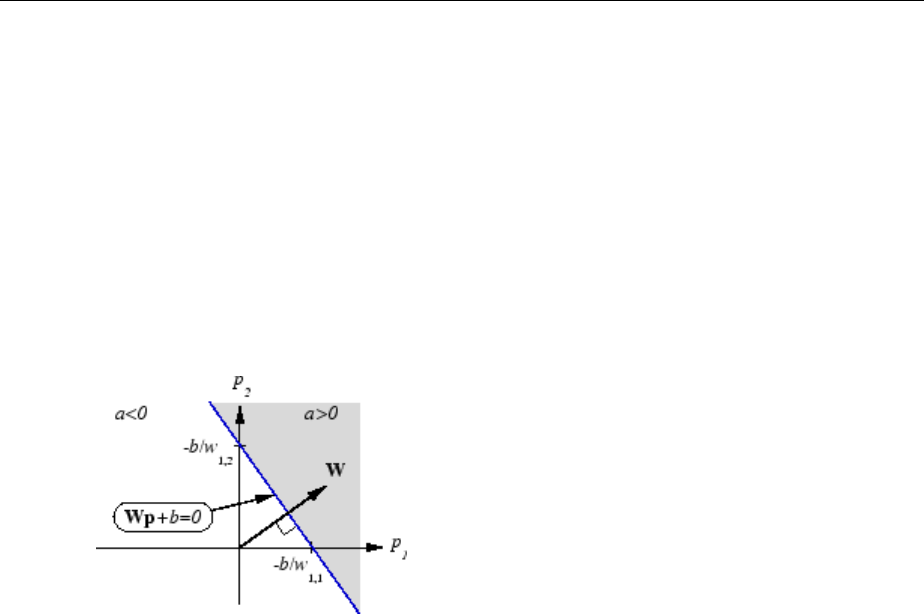

One Layer of Neurons

A one-layer network with R input elements and S neurons follows.

In this network, each element of the input vector p is connected to each neuron input

through the weight matrix W. The ith neuron has a summer that gathers its weighted

inputs and bias to form its own scalar output n(i). The various n(i) taken together form an

S-element net input vector n. Finally, the neuron layer outputs form a column vector a.

The expression for a is shown at the bottom of the gure.

Neural Network Architectures

1-11

Note that it is common for the number of inputs to a layer to be dierent from the number

of neurons (i.e., R is not necessarily equal to S). A layer is not constrained to have the

number of its inputs equal to the number of its neurons.

You can create a single (composite) layer of neurons having dierent transfer functions

simply by putting two of the networks shown earlier in parallel. Both networks would

have the same inputs, and each network would create some of the outputs.

The input vector elements enter the network through the weight matrix W.

W=

È

Î

Í

Í

Í

Í

Í

˘

˚

˙

˙

˙

˙

˙

w w w

w w w

w w w

R

R

S S S R

1 1 1 2 1

2 1 2 2 2

1 2

, , ,

, , ,

, , ,

…

…

…

Note that the row indices on the elements of matrix W indicate the destination neuron of

the weight, and the column indices indicate which source is the input for that weight.

Thus, the indices in w1,2 say that the strength of the signal from the second input element

to the rst (and only) neuron is w1,2.

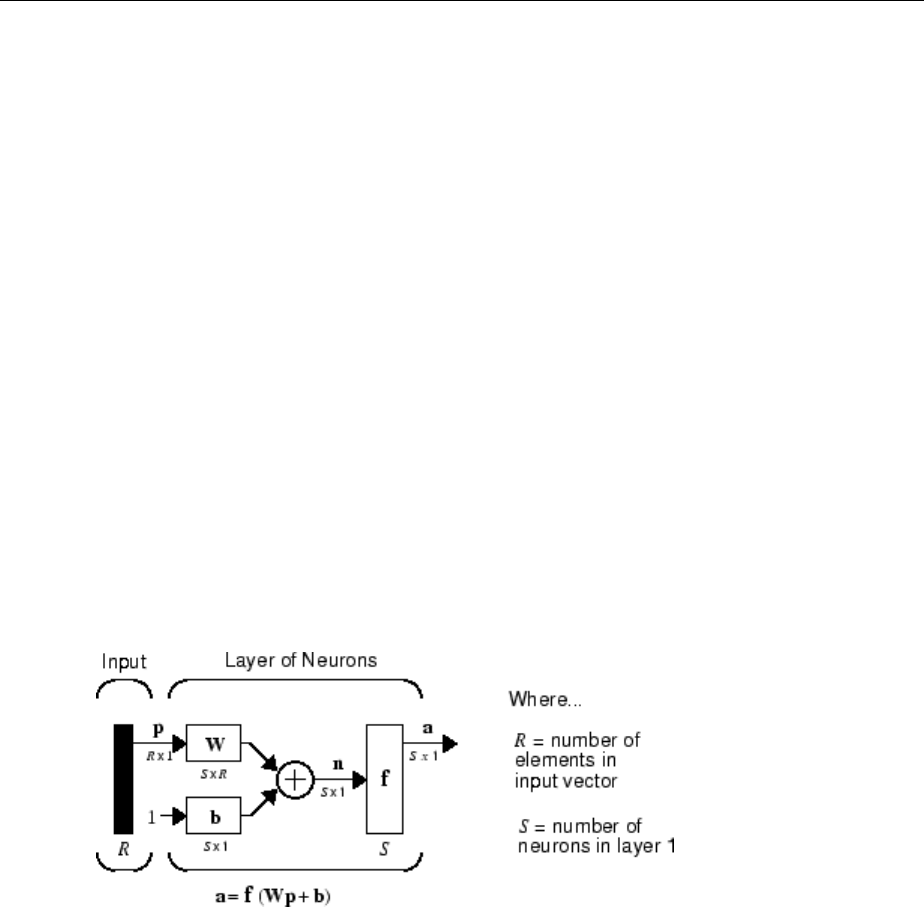

The S neuron R-input one-layer network also can be drawn in abbreviated notation.

Here p is an R-length input vector, W is an S × R matrix, a and b are S-length vectors. As

dened previously, the neuron layer includes the weight matrix, the multiplication

operations, the bias vector b, the summer, and the transfer function blocks.

1Neural Network Objects, Data, and Training Styles

1-12

Inputs and Layers

To describe networks having multiple layers, the notation must be extended. Specically,

it needs to make a distinction between weight matrices that are connected to inputs and

weight matrices that are connected between layers. It also needs to identify the source

and destination for the weight matrices.

We will call weight matrices connected to inputs input weights; we will call weight

matrices connected to layer outputs layer weights. Further, superscripts are used to

identify the source (second index) and the destination (rst index) for the various weights

and other elements of the network. To illustrate, the one-layer multiple input network

shown earlier is redrawn in abbreviated form here.

As you can see, the weight matrix connected to the input vector p is labeled as an input

weight matrix (IW1,1) having a source 1 (second index) and a destination 1 (rst index).

Elements of layer 1, such as its bias, net input, and output have a superscript 1 to say that

they are associated with the rst layer.

“Multiple Layers of Neurons” on page 1-13 uses layer weight (LW) matrices as well as

input weight (IW) matrices.

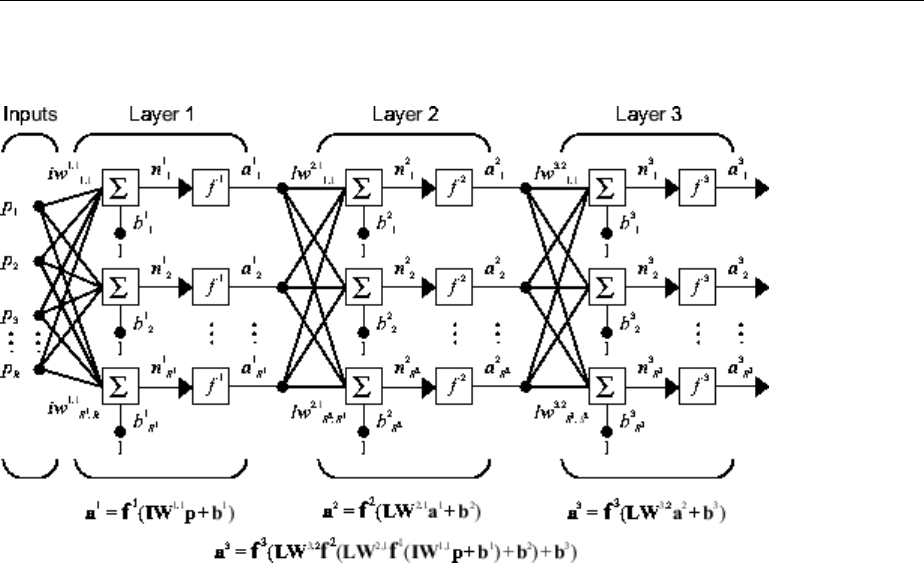

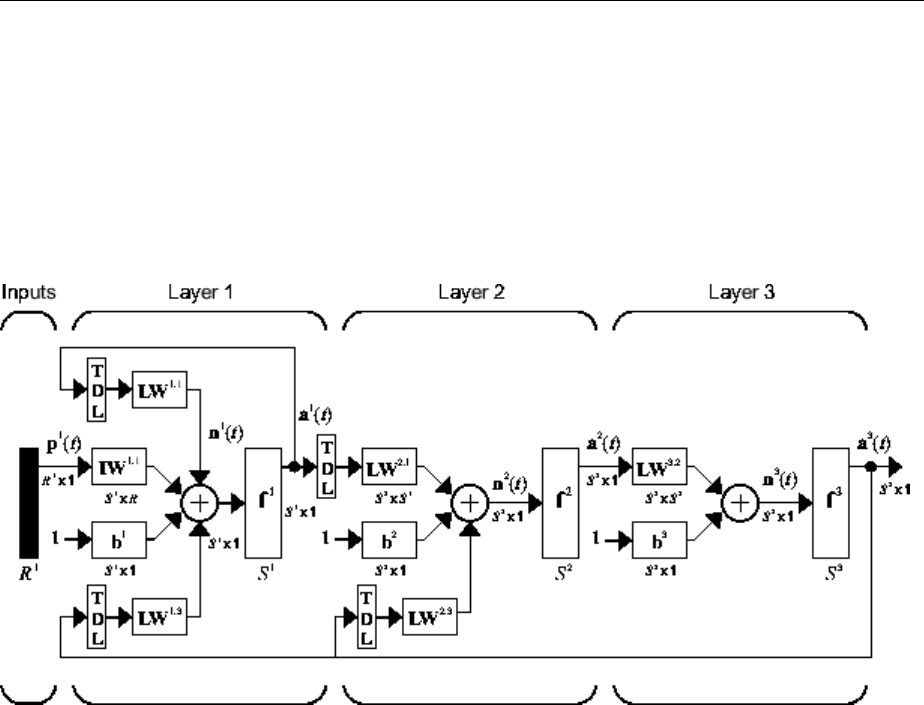

Multiple Layers of Neurons

A network can have several layers. Each layer has a weight matrix W, a bias vector b, and

an output vector a. To distinguish between the weight matrices, output vectors, etc., for

each of these layers in the gures, the number of the layer is appended as a superscript

to the variable of interest. You can see the use of this layer notation in the three-layer

network shown next, and in the equations at the bottom of the gure.

Neural Network Architectures

1-13

The network shown above has R1 inputs, S1 neurons in the rst layer, S2 neurons in the

second layer, etc. It is common for dierent layers to have dierent numbers of neurons.

A constant input 1 is fed to the bias for each neuron.

Note that the outputs of each intermediate layer are the inputs to the following layer.

Thus layer 2 can be analyzed as a one-layer network with S1 inputs, S2 neurons, and an S2

× S1 weight matrix W2. The input to layer 2 is a1; the output is a2. Now that all the

vectors and matrices of layer 2 have been identied, it can be treated as a single-layer

network on its own. This approach can be taken with any layer of the network.

The layers of a multilayer network play dierent roles. A layer that produces the network

output is called an output layer. All other layers are called hidden layers. The three-layer

network shown earlier has one output layer (layer 3) and two hidden layers (layer 1 and

layer 2). Some authors refer to the inputs as a fourth layer. This toolbox does not use that

designation.

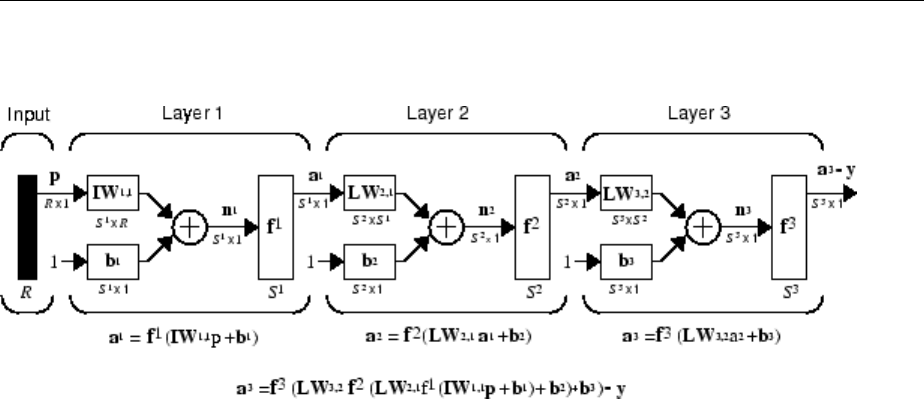

The architecture of a multilayer network with a single input vector can be specied with

the notation R − S1 − S2 −...− SM, where the number of elements of the input vector and

the number of neurons in each layer are specied.

The same three-layer network can also be drawn using abbreviated notation.

1Neural Network Objects, Data, and Training Styles

1-14

Multiple-layer networks are quite powerful. For instance, a network of two layers, where

the rst layer is sigmoid and the second layer is linear, can be trained to approximate any

function (with a nite number of discontinuities) arbitrarily well. This kind of two-layer

network is used extensively in “Multilayer Neural Networks and Backpropagation

Training” on page 4-2.

Here it is assumed that the output of the third layer, a3, is the network output of interest,

and this output is labeled as y. This notation is used to specify the output of multilayer

networks.

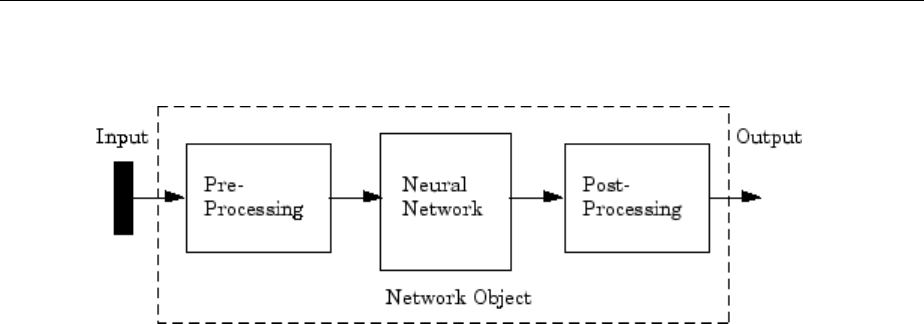

Input and Output Processing Functions

Network inputs might have associated processing functions. Processing functions

transform user input data to a form that is easier or more eicient for a network.

For instance, mapminmax transforms input data so that all values fall into the interval

[−1, 1]. This can speed up learning for many networks. removeconstantrows removes

the rows of the input vector that correspond to input elements that always have the same

value, because these input elements are not providing any useful information to the

network. The third common processing function is fixunknowns, which recodes

unknown data (represented in the user's data with NaN values) into a numerical form for

the network. fixunknowns preserves information about which values are known and

which are unknown.

Similarly, network outputs can also have associated processing functions. Output

processing functions are used to transform user-provided target vectors for network use.

Neural Network Architectures

1-15

Then, network outputs are reverse-processed using the same functions to produce output

data with the same characteristics as the original user-provided targets.

Both mapminmax and removeconstantrows are often associated with network outputs.

However, fixunknowns is not. Unknown values in targets (represented by NaN values) do

not need to be altered for network use.

Processing functions are described in more detail in “Choose Neural Network Input-

Output Processing Functions” on page 4-9.

See Also

More About

• “Neuron Model” on page 1-5

•“Workow for Neural Network Design” on page 1-2

1Neural Network Objects, Data, and Training Styles

1-16

Create Neural Network Object

This topic is part of the design workow described in “Workow for Neural Network

Design” on page 1-2.

The easiest way to create a neural network is to use one of the network creation

functions. To investigate how this is done, you can create a simple, two-layer feedforward

network, using the command feedforwardnet:

net = feedforwardnet

net =

Neural Network

name: 'Feed-Forward Neural Network'

userdata: (your custom info)

dimensions:

numInputs: 1

numLayers: 2

numOutputs: 1

numInputDelays: 0

numLayerDelays: 0

numFeedbackDelays: 0

numWeightElements: 10

sampleTime: 1

connections:

biasConnect: [1; 1]

inputConnect: [1; 0]

layerConnect: [0 0; 1 0]

outputConnect: [0 1]

subobjects:

inputs: {1x1 cell array of 1 input}

layers: {2x1 cell array of 2 layers}

outputs: {1x2 cell array of 1 output}

biases: {2x1 cell array of 2 biases}

inputWeights: {2x1 cell array of 1 weight}

layerWeights: {2x2 cell array of 1 weight}

Create Neural Network Object

1-17

functions:

adaptFcn: 'adaptwb'

adaptParam: (none)

derivFcn: 'defaultderiv'

divideFcn: 'dividerand'

divideParam: .trainRatio, .valRatio, .testRatio

divideMode: 'sample'

initFcn: 'initlay'

performFcn: 'mse'

performParam: .regularization, .normalization

plotFcns: {'plotperform', plottrainstate, ploterrhist,

plotregression}

plotParams: {1x4 cell array of 4 params}

trainFcn: 'trainlm'

trainParam: .showWindow, .showCommandLine, .show, .epochs,

.time, .goal, .min_grad, .max_fail, .mu, .mu_dec,

.mu_inc, .mu_max

weight and bias values:

IW: {2x1 cell} containing 1 input weight matrix

LW: {2x2 cell} containing 1 layer weight matrix

b: {2x1 cell} containing 2 bias vectors

methods:

adapt: Learn while in continuous use

configure: Configure inputs & outputs

gensim: Generate Simulink model

init: Initialize weights & biases

perform: Calculate performance

sim: Evaluate network outputs given inputs

train: Train network with examples

view: View diagram

unconfigure: Unconfigure inputs & outputs

evaluate: outputs = net(inputs)

This display is an overview of the network object, which is used to store all of the

information that denes a neural network. There is a lot of detail here, but there are a

few key sections that can help you to see how the network object is organized.

1Neural Network Objects, Data, and Training Styles

1-18

The dimensions section stores the overall structure of the network. Here you can see that

there is one input to the network (although the one input can be a vector containing many

elements), one network output, and two layers.

The connections section stores the connections between components of the network. For

example, there is a bias connected to each layer, the input is connected to layer 1, and the

output comes from layer 2. You can also see that layer 1 is connected to layer 2. (The

rows of net.layerConnect represent the destination layer, and the columns represent

the source layer. A one in this matrix indicates a connection, and a zero indicates no

connection. For this example, there is a single one in element 2,1 of the matrix.)

The key subobjects of the network object are inputs, layers, outputs, biases,

inputWeights, and layerWeights. View the layers subobject for the rst layer with

the command

net.layers{1}

Neural Network Layer

name: 'Hidden'

dimensions: 10

distanceFcn: (none)

distanceParam: (none)

distances: []

initFcn: 'initnw'

netInputFcn: 'netsum'

netInputParam: (none)

positions: []

range: [10x2 double]

size: 10

topologyFcn: (none)

transferFcn: 'tansig'

transferParam: (none)

userdata: (your custom info)

The number of neurons in a layer is given by its size property. In this case, the layer has

10 neurons, which is the default size for the feedforwardnet command. The net input

function is netsum (summation) and the transfer function is the tansig. If you wanted to

change the transfer function to logsig, for example, you could execute the command:

net.layers{1}.transferFcn = 'logsig';

To view the layerWeights subobject for the weight between layer 1 and layer 2, use the

command:

Create Neural Network Object

1-19

net.layerWeights{2,1}

Neural Network Weight

delays: 0

initFcn: (none)

initConfig: .inputSize

learn: true

learnFcn: 'learngdm'

learnParam: .lr, .mc

size: [0 10]

weightFcn: 'dotprod'

weightParam: (none)

userdata: (your custom info)

The weight function is dotprod, which represents standard matrix multiplication (dot

product). Note that the size of this layer weight is 0-by-10. The reason that we have zero

rows is because the network has not yet been congured for a particular data set. The

number of output neurons is equal to the number of rows in your target vector. During the

conguration process, you will provide the network with example inputs and targets, and

then the number of output neurons can be assigned.

This gives you some idea of how the network object is organized. For many applications,

you will not need to be concerned about making changes directly to the network object,

since that is taken care of by the network creation functions. It is usually only when you

want to override the system defaults that it is necessary to access the network object

directly. Other topics will show how this is done for particular networks and training

methods.

To investigate the network object in more detail, you might nd that the object listings,

such as the one shown above, contain links to help on each subobject. Click the links, and

you can selectively investigate those parts of the object that are of interest to you.

1Neural Network Objects, Data, and Training Styles

1-20

Congure Neural Network Inputs and Outputs

This topic is part of the design workow described in “Workow for Neural Network

Design” on page 1-2.

After a neural network has been created, it must be congured. The conguration step

consists of examining input and target data, setting the network's input and output sizes

to match the data, and choosing settings for processing inputs and outputs that will

enable best network performance. The conguration step is normally done automatically,

when the training function is called. However, it can be done manually, by using the

conguration function. For example, to congure the network you created previously to

approximate a sine function, issue the following commands:

p = -2:.1:2;

t = sin(pi*p/2);

net1 = configure(net,p,t);

You have provided the network with an example set of inputs and targets (desired

network outputs). With this information, the configure function can set the network

input and output sizes to match the data.

After the conguration, if you look again at the weight between layer 1 and layer 2, you

can see that the dimension of the weight is 1 by 20. This is because the target for this

network is a scalar.

net1.layerWeights{2,1}

Neural Network Weight

delays: 0

initFcn: (none)

initConfig: .inputSize

learn: true

learnFcn: 'learngdm'

learnParam: .lr, .mc

size: [1 10]

weightFcn: 'dotprod'

weightParam: (none)

userdata: (your custom info)

In addition to setting the appropriate dimensions for the weights, the conguration step

also denes the settings for the processing of inputs and outputs. The input processing

can be located in the inputs subobject:

Congure Neural Network Inputs and Outputs

1-21

net1.inputs{1}

Neural Network Input

name: 'Input'

feedbackOutput: []

processFcns: {'removeconstantrows', mapminmax}

processParams: {1x2 cell array of 2 params}

processSettings: {1x2 cell array of 2 settings}

processedRange: [1x2 double]

processedSize: 1

range: [1x2 double]

size: 1

userdata: (your custom info)

Before the input is applied to the network, it will be processed by two functions:

removeconstantrows and mapminmax. These are discussed fully in “Multilayer Neural

Networks and Backpropagation Training” on page 4-2 so we won't address the

particulars here. These processing functions may have some processing parameters,

which are contained in the subobject net1.inputs{1}.processParam. These have

default values that you can override. The processing functions can also have conguration

settings that are dependent on the sample data. These are contained in

net1.inputs{1}.processSettings and are set during the conguration process. For

example, the mapminmax processing function normalizes the data so that all inputs fall in

the range [−1, 1]. Its conguration settings include the minimum and maximum values in

the sample data, which it needs to perform the correct normalization. This will be

discussed in much more depth in “Multilayer Neural Networks and Backpropagation

Training” on page 4-2.

As a general rule, we use the term “parameter,” as in process parameters, training

parameters, etc., to denote constants that have default values that are assigned by the

software when the network is created (and which you can override). We use the term

“conguration setting,” as in process conguration setting, to denote constants that are

assigned by the software from an analysis of sample data. These settings do not have

default values, and should not generally be overridden.

For more information, see also “Understanding Neural Network Toolbox Data Structures”

on page 1-23.

1Neural Network Objects, Data, and Training Styles

1-22

Understanding Neural Network Toolbox Data Structures

In this section...

“Simulation with Concurrent Inputs in a Static Network” on page 1-23

“Simulation with Sequential Inputs in a Dynamic Network” on page 1-24

“Simulation with Concurrent Inputs in a Dynamic Network” on page 1-26

This topic discusses how the format of input data structures aects the simulation of

networks. It starts with static networks, and then continues with dynamic networks. The

following section describes how the format of the data structures aects network

training.

There are two basic types of input vectors: those that occur concurrently (at the same

time, or in no particular time sequence), and those that occur sequentially in time. For

concurrent vectors, the order is not important, and if there were a number of networks

running in parallel, you could present one input vector to each of the networks. For

sequential vectors, the order in which the vectors appear is important.

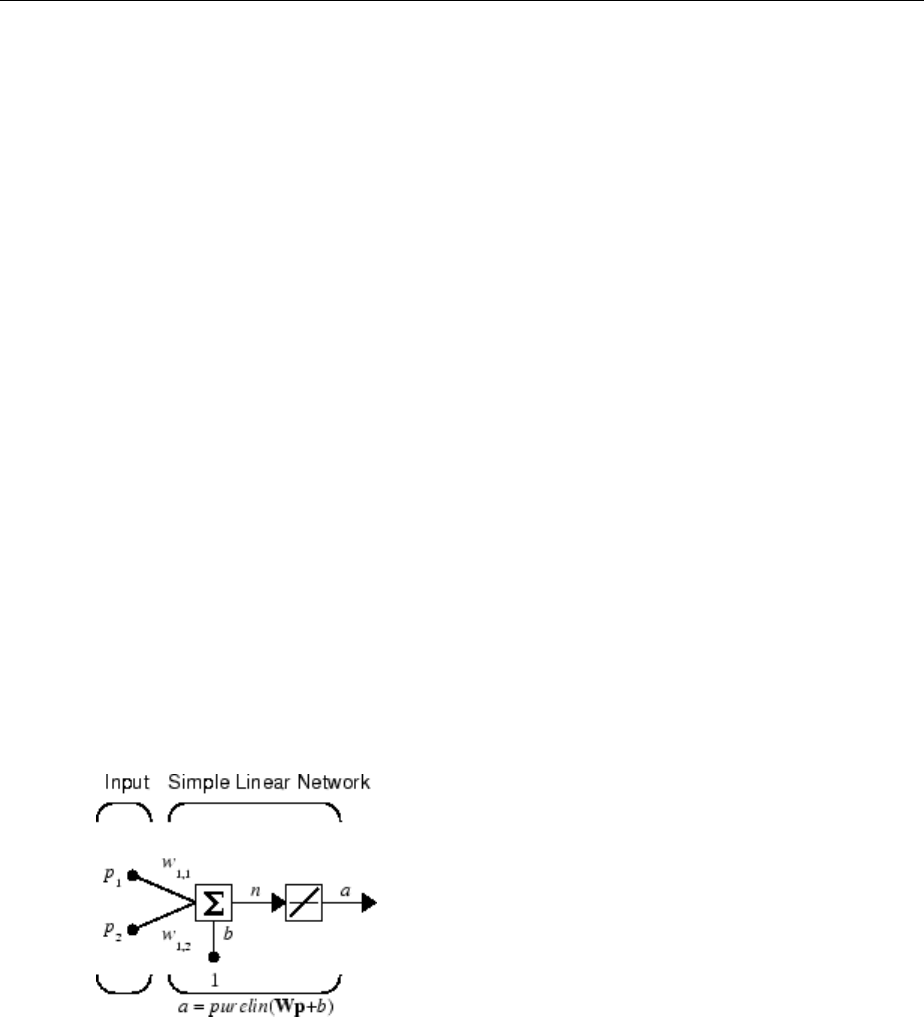

Simulation with Concurrent Inputs in a Static Network

The simplest situation for simulating a network occurs when the network to be simulated

is static (has no feedback or delays). In this case, you need not be concerned about

whether or not the input vectors occur in a particular time sequence, so you can treat the

inputs as concurrent. In addition, the problem is made even simpler by assuming that the

network has only one input vector. Use the following network as an example.

To set up this linear feedforward network, use the following commands:

Understanding Neural Network Toolbox Data Structures

1-23

net = linearlayer;

net.inputs{1}.size = 2;

net.layers{1}.dimensions = 1;

For simplicity, assign the weight matrix and bias to be W = [1 2] and b = [0].

The commands for these assignments are

net.IW{1,1} = [1 2];

net.b{1} = 0;

Suppose that the network simulation data set consists of Q = 4 concurrent vectors:

p p p p

1 2 3 4

1

2

2

1

2

3

3

1

=È

Î

͢

˚

˙=È

Î

͢

˚

˙=È

Î

͢

˚

˙=È

Î

͢

˚

˙

,,,

Concurrent vectors are presented to the network as a single matrix:

P = [1 2 2 3; 2 1 3 1];

You can now simulate the network:

A = net(P)

A =

5 4 8 5

A single matrix of concurrent vectors is presented to the network, and the network

produces a single matrix of concurrent vectors as output. The result would be the same if

there were four networks operating in parallel and each network received one of the

input vectors and produced one of the outputs. The ordering of the input vectors is not

important, because they do not interact with each other.

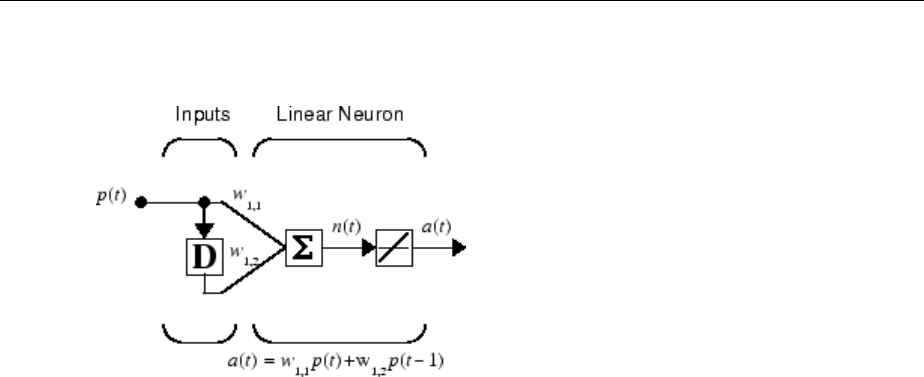

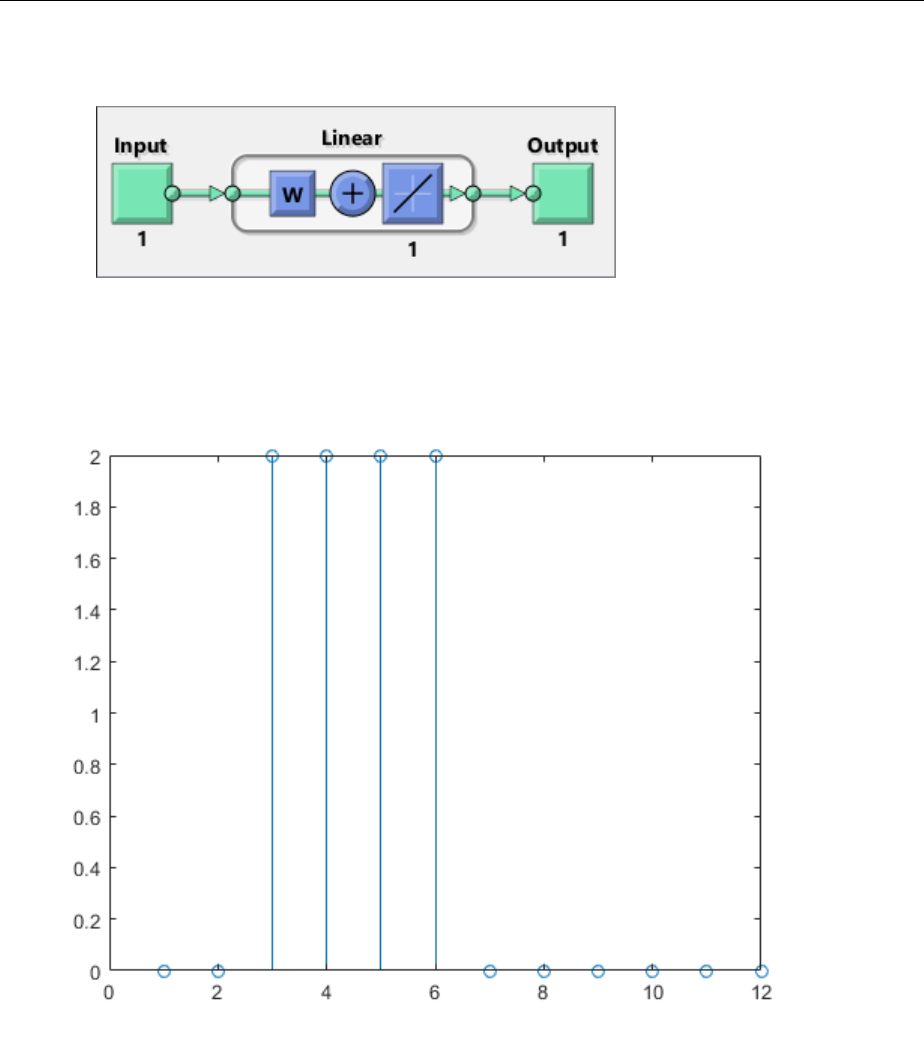

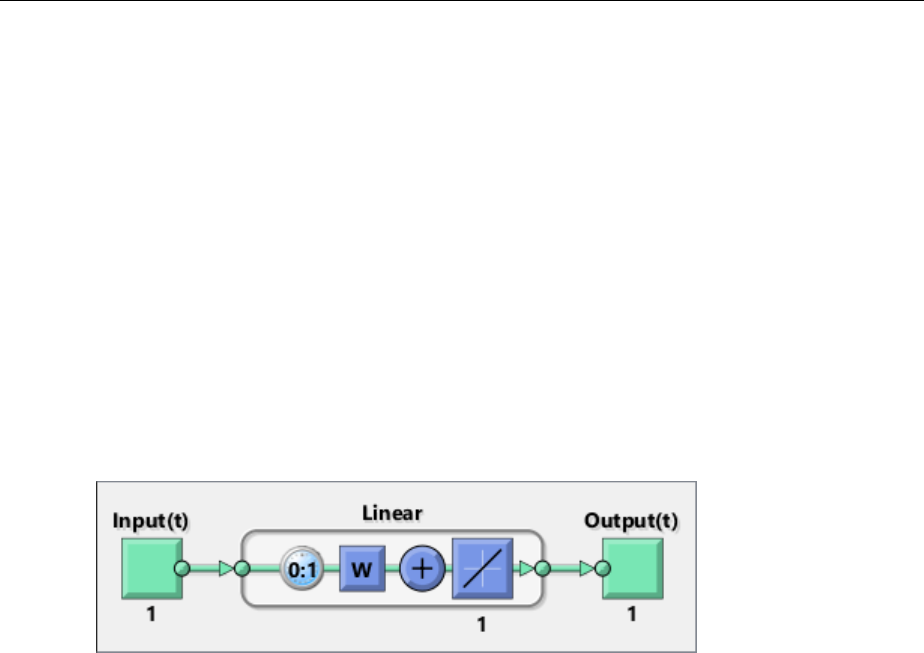

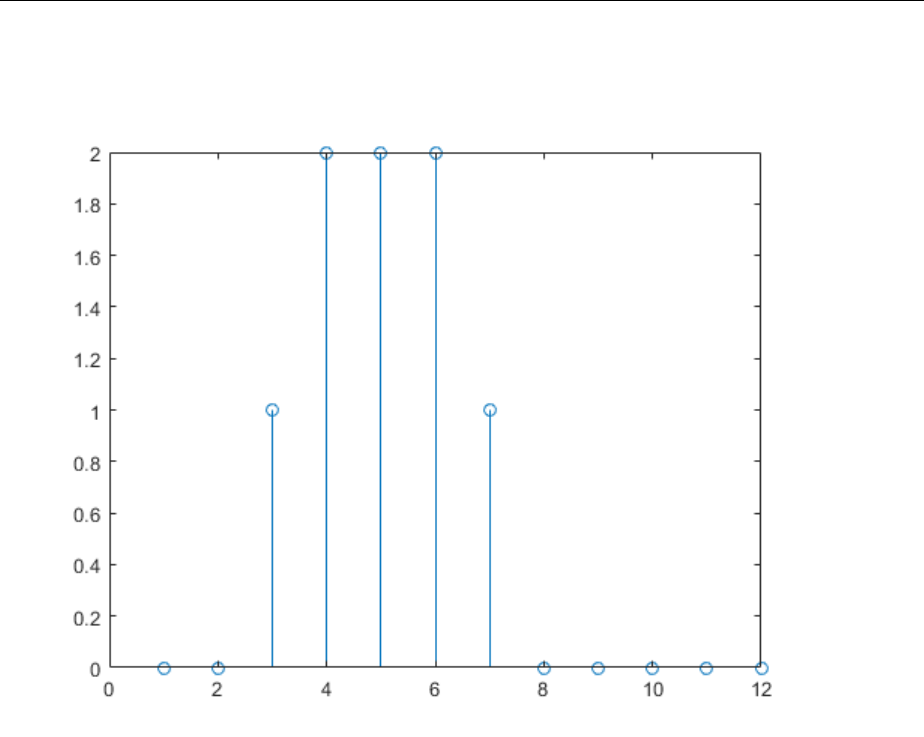

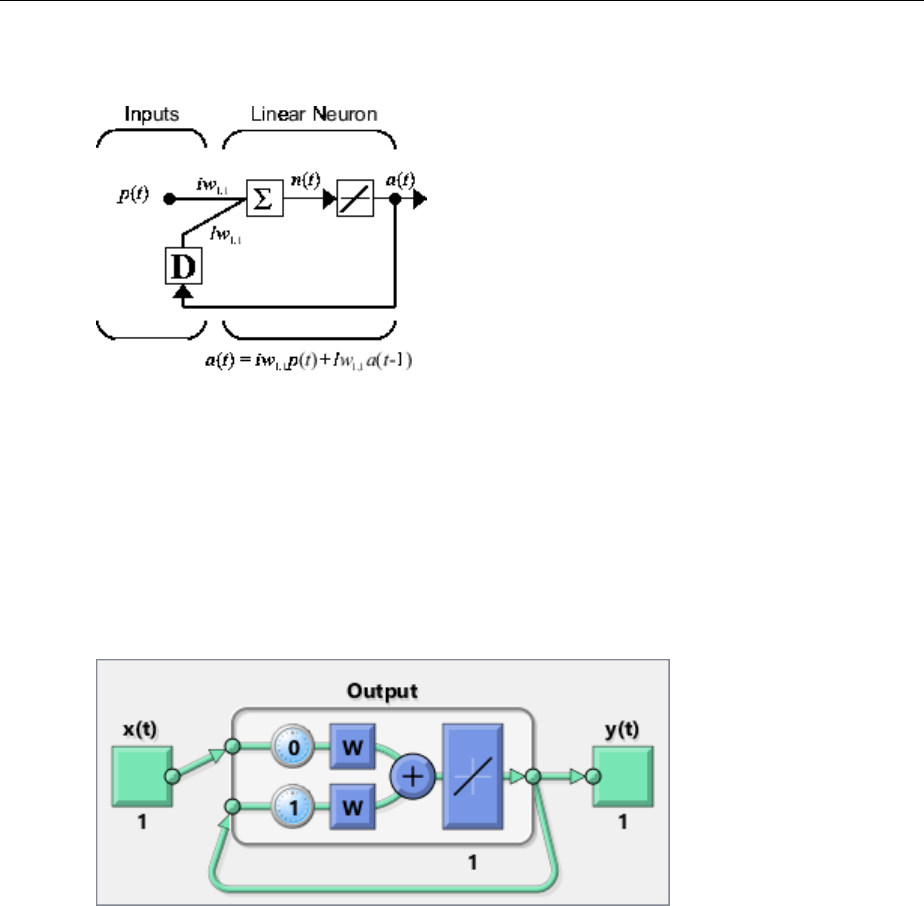

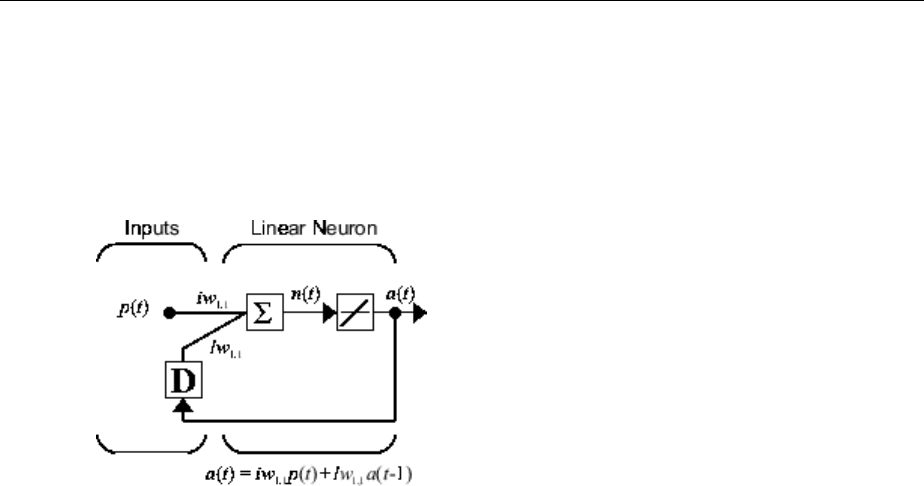

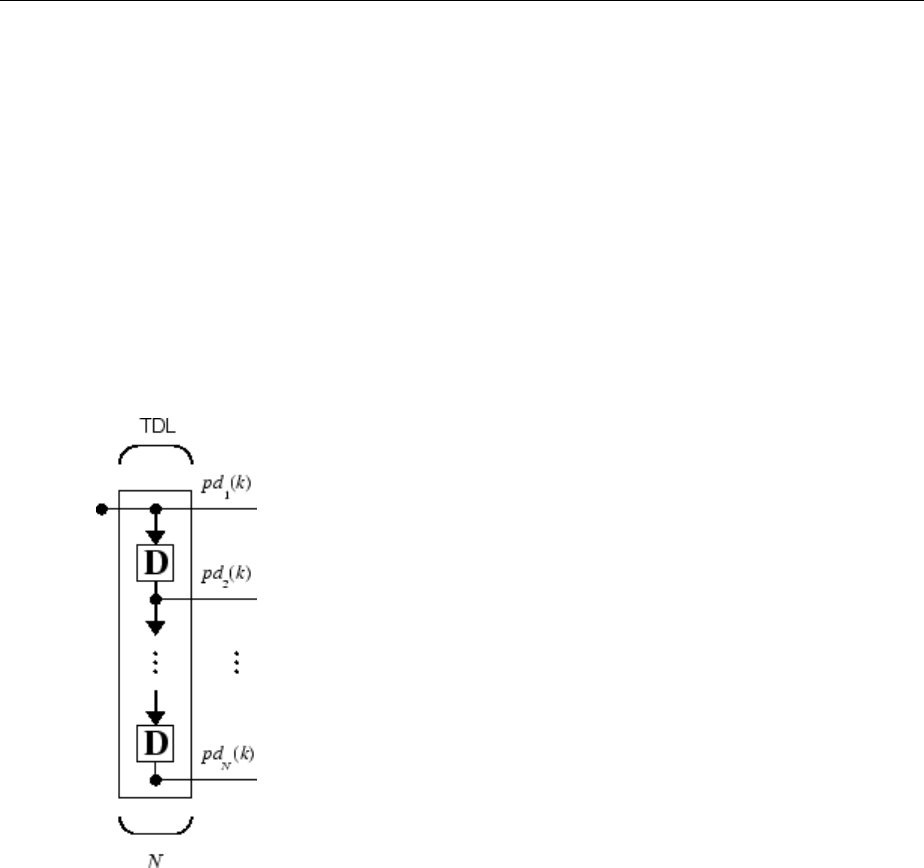

Simulation with Sequential Inputs in a Dynamic Network

When a network contains delays, the input to the network would normally be a sequence

of input vectors that occur in a certain time order. To illustrate this case, the next gure

shows a simple network that contains one delay.

1Neural Network Objects, Data, and Training Styles

1-24

The following commands create this network:

net = linearlayer([0 1]);

net.inputs{1}.size = 1;

net.layers{1}.dimensions = 1;

net.biasConnect = 0;

Assign the weight matrix to be W = [1 2].

The command is:

net.IW{1,1} = [1 2];

Suppose that the input sequence is:

p p p p

1 2 3 4

1 2 3 4=

[ ]

=

[ ]

=

[ ]

=

[ ]

,,,

Sequential inputs are presented to the network as elements of a cell array:

P = {1 2 3 4};

You can now simulate the network:

A = net(P)

A =

[1] [4] [7] [10]

You input a cell array containing a sequence of inputs, and the network produces a cell

array containing a sequence of outputs. The order of the inputs is important when they

are presented as a sequence. In this case, the current output is obtained by multiplying

Understanding Neural Network Toolbox Data Structures

1-25

the current input by 1 and the preceding input by 2 and summing the result. If you were

to change the order of the inputs, the numbers obtained in the output would change.

Simulation with Concurrent Inputs in a Dynamic Network

If you were to apply the same inputs as a set of concurrent inputs instead of a sequence of

inputs, you would obtain a completely dierent response. (However, it is not clear why

you would want to do this with a dynamic network.) It would be as if each input were

applied concurrently to a separate parallel network. For the previous example,

“Simulation with Sequential Inputs in a Dynamic Network” on page 1-24, if you use a

concurrent set of inputs you have

p p p p

1 2 3 4

1 2 3 4=

[ ]

=

[ ]

=

[ ]

=

[ ]

,,,

which can be created with the following code:

P = [1 2 3 4];

When you simulate with concurrent inputs, you obtain

A = net(P)

A =

1 2 3 4

The result is the same as if you had concurrently applied each one of the inputs to a

separate network and computed one output. Note that because you did not assign any

initial conditions to the network delays, they were assumed to be 0. For this case the

output is simply 1 times the input, because the weight that multiplies the current input is

1.

In certain special cases, you might want to simulate the network response to several

dierent sequences at the same time. In this case, you would want to present the network

with a concurrent set of sequences. For example, suppose you wanted to present the

following two sequences to the network:

p p p p

p p

1 1 1 1

2 2

1 1 2 2 3 3 4 4

1 4 2 3

( ) , ( ) , ( ) , ( )

( ) , ( )

=

[ ]

=

[ ]

=

[ ]

=

[ ]

=

[ ]

=

[ ]

,, ( ) , ( )p p

2 2

3 2 4 1=

[ ]

=

[ ]

The input P should be a cell array, where each element of the array contains the two

elements of the two sequences that occur at the same time:

1Neural Network Objects, Data, and Training Styles

1-26

P = {[1 4] [2 3] [3 2] [4 1]};

You can now simulate the network:

A = net(P);

The resulting network output would be

A = {[1 4] [4 11] [7 8] [10 5]}

As you can see, the rst column of each matrix makes up the output sequence produced

by the rst input sequence, which was the one used in an earlier example. The second

column of each matrix makes up the output sequence produced by the second input

sequence. There is no interaction between the two concurrent sequences. It is as if they

were each applied to separate networks running in parallel.

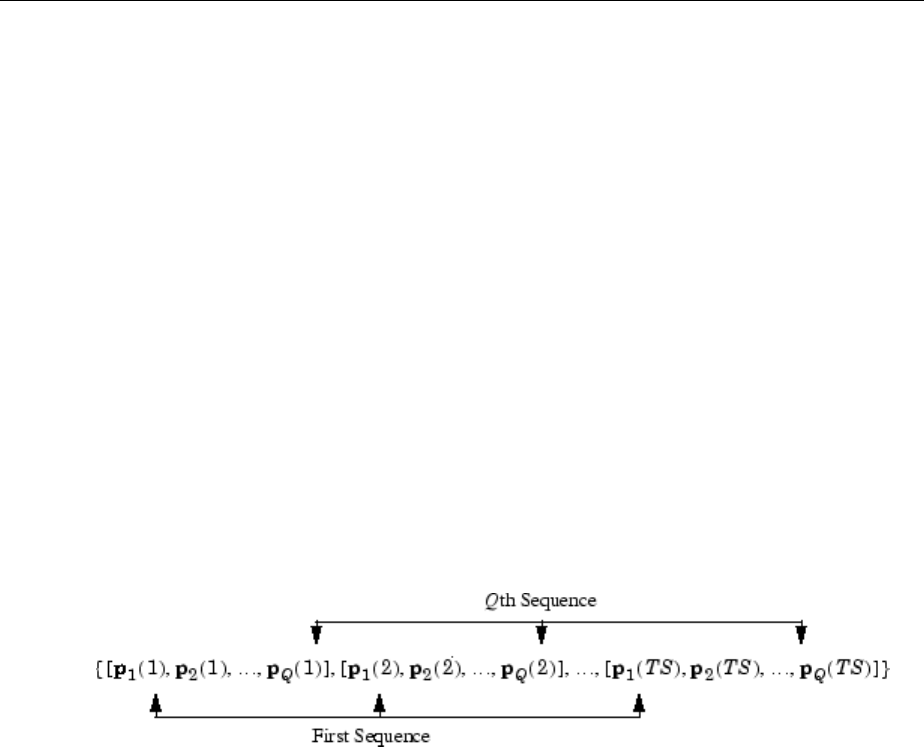

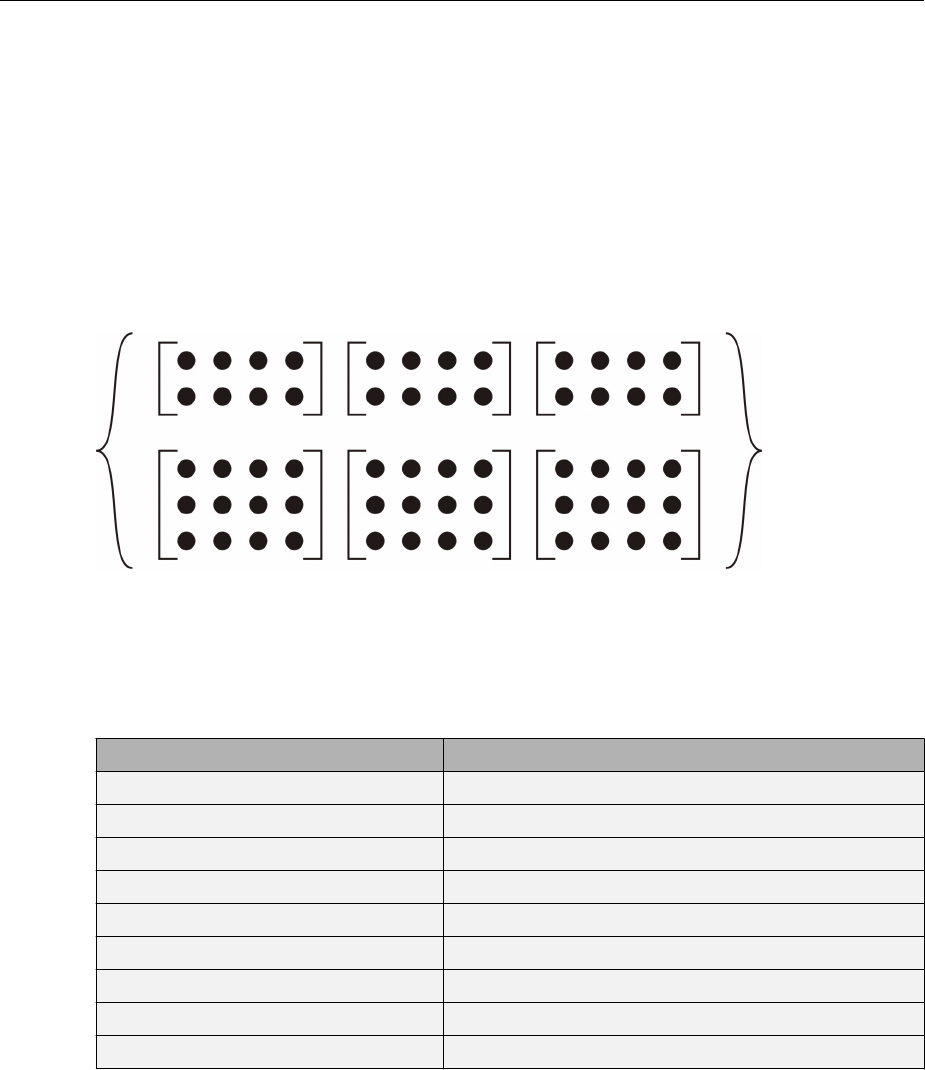

The following diagram shows the general format for the network input P when there are

Q concurrent sequences of TS time steps. It covers all cases where there is a single input

vector. Each element of the cell array is a matrix of concurrent vectors that correspond to

the same point in time for each sequence. If there are multiple input vectors, there will be

multiple rows of matrices in the cell array.

In this topic, you apply sequential and concurrent inputs to dynamic networks. In

“Simulation with Concurrent Inputs in a Static Network” on page 1-23, you applied

concurrent inputs to static networks. It is also possible to apply sequential inputs to static

networks. It does not change the simulated response of the network, but it can aect the

way in which the network is trained. This will become clear in “Neural Network Training

Concepts” on page 1-28.

See also “Congure Neural Network Inputs and Outputs” on page 1-21.

Understanding Neural Network Toolbox Data Structures

1-27

Neural Network Training Concepts

In this section...

“Incremental Training with adapt” on page 1-28

“Batch Training” on page 1-31

“Training Feedback” on page 1-34

This topic is part of the design workow described in “Workow for Neural Network

Design” on page 1-2.

This topic describes two dierent styles of training. In incremental training the weights

and biases of the network are updated each time an input is presented to the network. In

batch training the weights and biases are only updated after all the inputs are presented.

The batch training methods are generally more eicient in the MATLAB environment, and

they are emphasized in the Neural Network Toolbox software, but there some

applications where incremental training can be useful, so that paradigm is implemented

as well.

Incremental Training with adapt

Incremental training can be applied to both static and dynamic networks, although it is

more commonly used with dynamic networks, such as adaptive lters. This section

illustrates how incremental training is performed on both static and dynamic networks.

Incremental Training of Static Networks

Consider again the static network used for the rst example. You want to train it

incrementally, so that the weights and biases are updated after each input is presented. In

this case you use the function adapt, and the inputs and targets are presented as

sequences.

Suppose you want to train the network to create the linear function:

t p p= +21 2

Then for the previous inputs,

p p p p

1 2 3 4

1

2

2

1

2

3

3

1

=È

Î

͢

˚

˙=È

Î

͢

˚

˙=È

Î

͢

˚

˙=È

Î

͢

˚

˙

,,,

1Neural Network Objects, Data, and Training Styles

1-28

the targets would be

t t t t

1 2 3 4

4577=

[ ]

=

[ ]

=

[ ]

=

[ ]

, , ,

For incremental training, you present the inputs and targets as sequences:

P = {[1;2] [2;1] [2;3] [3;1]};

T = {4 5 7 7};

First, set up the network with zero initial weights and biases. Also, set the initial learning

rate to zero to show the eect of incremental training.

net = linearlayer(0,0);

net = configure(net,P,T);

net.IW{1,1} = [0 0];

net.b{1} = 0;

Recall from “Simulation with Concurrent Inputs in a Static Network” on page 1-23 that,

for a static network, the simulation of the network produces the same outputs whether

the inputs are presented as a matrix of concurrent vectors or as a cell array of sequential

vectors. However, this is not true when training the network. When you use the adapt

function, if the inputs are presented as a cell array of sequential vectors, then the weights

are updated as each input is presented (incremental mode). As shown in the next section,

if the inputs are presented as a matrix of concurrent vectors, then the weights are

updated only after all inputs are presented (batch mode).

You are now ready to train the network incrementally.

[net,a,e,pf] = adapt(net,P,T);

The network outputs remain zero, because the learning rate is zero, and the weights are

not updated. The errors are equal to the targets:

a = [0] [0] [0] [0]

e = [4] [5] [7] [7]

If you now set the learning rate to 0.1 you can see how the network is adjusted as each

input is presented:

net.inputWeights{1,1}.learnParam.lr = 0.1;

net.biases{1,1}.learnParam.lr = 0.1;

[net,a,e,pf] = adapt(net,P,T);

a = [0] [2] [6] [5.8]

e = [4] [3] [1] [1.2]

Neural Network Training Concepts

1-29

The rst output is the same as it was with zero learning rate, because no update is made

until the rst input is presented. The second output is dierent, because the weights have

been updated. The weights continue to be modied as each error is computed. If the

network is capable and the learning rate is set correctly, the error is eventually driven to

zero.

Incremental Training with Dynamic Networks

You can also train dynamic networks incrementally. In fact, this would be the most

common situation.

To train the network incrementally, present the inputs and targets as elements of cell

arrays. Here are the initial input Pi and the inputs P and targets T as elements of cell

arrays.

Pi = {1};

P = {2 3 4};

T = {3 5 7};

Take the linear network with one delay at the input, as used in a previous example.

Initialize the weights to zero and set the learning rate to 0.1.

net = linearlayer([0 1],0.1);

net = configure(net,P,T);

net.IW{1,1} = [0 0];

net.biasConnect = 0;

You want to train the network to create the current output by summing the current and

the previous inputs. This is the same input sequence you used in the previous example

with the exception that you assign the rst term in the sequence as the initial condition

for the delay. You can now sequentially train the network using adapt.

[net,a,e,pf] = adapt(net,P,T,Pi);

a = [0] [2.4] [7.98]

e = [3] [2.6] [-0.98]

The rst output is zero, because the weights have not yet been updated. The weights

change at each subsequent time step.

1Neural Network Objects, Data, and Training Styles

1-30

Batch Training

Batch training, in which weights and biases are only updated after all the inputs and

targets are presented, can be applied to both static and dynamic networks. Both types of

networks are discussed in this section.

Batch Training with Static Networks

Batch training can be done using either adapt or train, although train is generally the

best option, because it typically has access to more eicient training algorithms.

Incremental training is usually done with adapt; batch training is usually done with

train.

For batch training of a static network with adapt, the input vectors must be placed in one

matrix of concurrent vectors.

P = [1 2 2 3; 2 1 3 1];

T = [4 5 7 7];

Begin with the static network used in previous examples. The learning rate is set to 0.01.

net = linearlayer(0,0.01);

net = configure(net,P,T);

net.IW{1,1} = [0 0];

net.b{1} = 0;

When you call adapt, it invokes trains (the default adaption function for the linear

network) and learnwh (the default learning function for the weights and biases). trains

uses Widrow-Ho learning.

[net,a,e,pf] = adapt(net,P,T);

a = 0 0 0 0

e = 4 5 7 7

Note that the outputs of the network are all zero, because the weights are not updated

until all the training set has been presented. If you display the weights, you nd

net.IW{1,1}

ans = 0.4900 0.4100

net.b{1}

ans =

0.2300

This is dierent from the result after one pass of adapt with incremental updating.

Neural Network Training Concepts

1-31

Now perform the same batch training using train. Because the Widrow-Ho rule can be

used in incremental or batch mode, it can be invoked by adapt or train. (There are

several algorithms that can only be used in batch mode (e.g., Levenberg-Marquardt), so

these algorithms can only be invoked by train.)

For this case, the input vectors can be in a matrix of concurrent vectors or in a cell array

of sequential vectors. Because the network is static and because train always operates

in batch mode, train converts any cell array of sequential vectors to a matrix of

concurrent vectors. Concurrent mode operation is used whenever possible because it has

a more eicient implementation in MATLAB code:

P = [1 2 2 3; 2 1 3 1];

T = [4 5 7 7];

The network is set up in the same way.

net = linearlayer(0,0.01);

net = configure(net,P,T);

net.IW{1,1} = [0 0];

net.b{1} = 0;

Now you are ready to train the network. Train it for only one epoch, because you used

only one pass of adapt. The default training function for the linear network is trainb,

and the default learning function for the weights and biases is learnwh, so you should

get the same results obtained using adapt in the previous example, where the default

adaption function was trains.

net.trainParam.epochs = 1;

net = train(net,P,T);

If you display the weights after one epoch of training, you nd

net.IW{1,1}

ans = 0.4900 0.4100

net.b{1}

ans =

0.2300

This is the same result as the batch mode training in adapt. With static networks, the

adapt function can implement incremental or batch training, depending on the format of

the input data. If the data is presented as a matrix of concurrent vectors, batch training

occurs. If the data is presented as a sequence, incremental training occurs. This is not

true for train, which always performs batch training, regardless of the format of the

input.

1Neural Network Objects, Data, and Training Styles

1-32

Batch Training with Dynamic Networks

Training static networks is relatively straightforward. If you use train the network is

trained in batch mode and the inputs are converted to concurrent vectors (columns of a

matrix), even if they are originally passed as a sequence (elements of a cell array). If you

use adapt, the format of the input determines the method of training. If the inputs are

passed as a sequence, then the network is trained in incremental mode. If the inputs are

passed as concurrent vectors, then batch mode training is used.

With dynamic networks, batch mode training is typically done with train only, especially

if only one training sequence exists. To illustrate this, consider again the linear network

with a delay. Use a learning rate of 0.02 for the training. (When using a gradient descent

algorithm, you typically use a smaller learning rate for batch mode training than

incremental training, because all the individual gradients are summed before determining

the step change to the weights.)

net = linearlayer([0 1],0.02);

net.inputs{1}.size = 1;

net.layers{1}.dimensions = 1;

net.IW{1,1} = [0 0];

net.biasConnect = 0;

net.trainParam.epochs = 1;

Pi = {1};

P = {2 3 4};

T = {3 5 6};

You want to train the network with the same sequence used for the incremental training

earlier, but this time you want to update the weights only after all the inputs are applied

(batch mode). The network is simulated in sequential mode, because the input is a

sequence, but the weights are updated in batch mode.

net = train(net,P,T,Pi);

The weights after one epoch of training are

net.IW{1,1}

ans = 0.9000 0.6200

These are dierent weights than you would obtain using incremental training, where the

weights would be updated three times during one pass through the training set. For batch

training the weights are only updated once in each epoch.

Neural Network Training Concepts

1-33

Training Feedback

The showWindow parameter allows you to specify whether a training window is visible

when you train. The training window appears by default. Two other parameters,

showCommandLine and show, determine whether command-line output is generated and

the number of epochs between command-line feedback during training. For instance, this

code turns o the training window and gives you training status information every 35

epochs when the network is later trained with train:

net.trainParam.showWindow = false;

net.trainParam.showCommandLine = true;

net.trainParam.show= 35;

Sometimes it is convenient to disable all training displays. To do that, turn o both the

training window and command-line feedback:

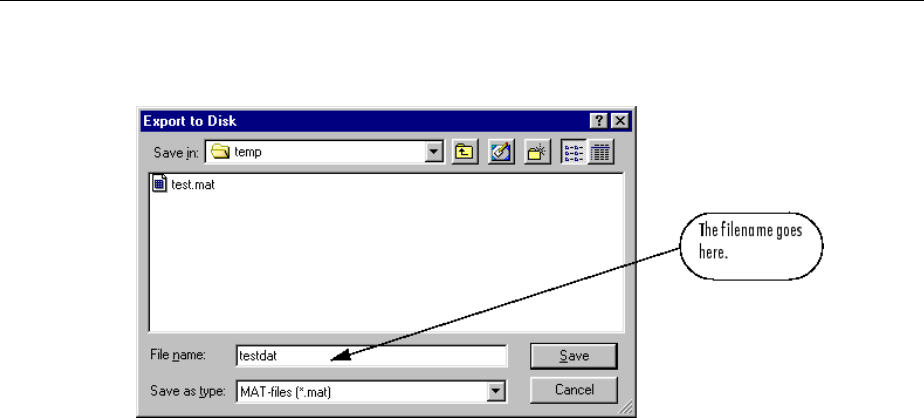

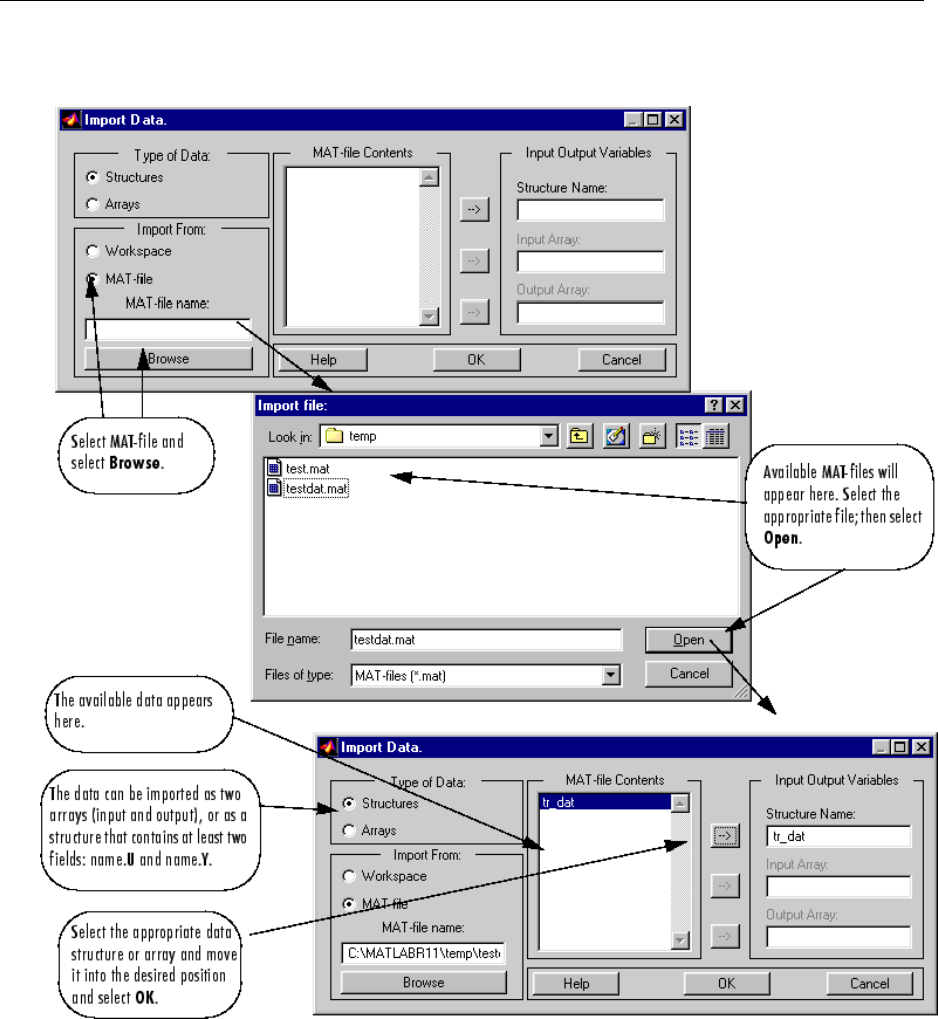

net.trainParam.showWindow = false;