Nimble User Manual



Contents

I Introduction

1 Welcome to NIMBLE
  1.1 What does NIMBLE do?
  1.2 How to use this manual

2 Lightning introduction
  2.1 A brief example
  2.2 Creating a model
  2.3 Compiling the model
  2.4 One-line invocation of MCMC
  2.5 Creating, compiling and running a basic MCMC configuration
  2.6 Customizing the MCMC
  2.7 Running MCEM
  2.8 Creating your own functions

3 More introduction
  3.1 NIMBLE adopts and extends the BUGS language for specifying models
  3.2 nimbleFunctions for writing algorithms
  3.3 The NIMBLE algorithm library

4 Installing NIMBLE
  4.1 Requirements to run NIMBLE
  4.2 Installing a C++ compiler for NIMBLE to use
    4.2.1 OS X
    4.2.2 Linux
    4.2.3 Windows
  4.3 Installing the NIMBLE package
    4.3.1 Problems with installation
  4.4 Customizing your installation
    4.4.1 Using your own copy of Eigen
    4.4.2 Using libnimble
    4.4.3 BLAS and LAPACK
    4.4.4 Customizing compilation of the NIMBLE-generated C++

II Models in NIMBLE

5 Writing models in NIMBLE’s dialect of BUGS
  5.1 Comparison to BUGS dialects supported by WinBUGS, OpenBUGS and JAGS
    5.1.1 Supported features of BUGS and JAGS
    5.1.2 NIMBLE’s Extensions to BUGS and JAGS
    5.1.3 Not-yet-supported features of BUGS and JAGS
  5.2 Writing models
    5.2.1 Declaring stochastic and deterministic nodes
    5.2.2 More kinds of BUGS declarations
    5.2.3 Vectorized versus scalar declarations
    5.2.4 Available distributions
    5.2.5 Available BUGS language functions
    5.2.6 Available link functions
    5.2.7 Truncation, censoring, and constraints

6 Building and using models
  6.1 Creating model objects
    6.1.1 Using nimbleModel to create a model
    6.1.2 Creating a model from standard BUGS and JAGS input files
    6.1.3 Making multiple instances from the same model definition
  6.2 NIMBLE models are objects you can query and manipulate
    6.2.1 What are variables and nodes?
    6.2.2 Determining the nodes and variables in a model
    6.2.3 Accessing nodes
    6.2.4 How nodes are named
    6.2.5 Why use node names?
    6.2.6 Checking if a node holds data

III Algorithms in NIMBLE

7 MCMC
  7.1 One-line invocation of MCMC: nimbleMCMC
  7.2 The MCMC configuration
    7.2.1 Default MCMC configuration
    7.2.2 Customizing the MCMC configuration
  7.3 Building and compiling the MCMC
  7.4 User-friendly execution of MCMC algorithms: runMCMC
  7.5 Running the MCMC
    7.5.1 Measuring sampler computation times: getTimes
  7.6 Extracting MCMC samples
  7.7 Calculating WAIC
  7.8 k-fold cross-validation
  7.9 Samplers provided with NIMBLE
    7.9.1 Conjugate (‘Gibbs’) samplers
    7.9.2 Customized log-likelihood evaluations: RW_llFunction sampler
    7.9.3 Particle MCMC sampler
  7.10 Detailed MCMC example: litters
  7.11 Comparing different MCMCs with MCMCsuite and compareMCMCs
    7.11.1 MCMC Suite example: litters
    7.11.2 MCMC Suite outputs
    7.11.3 Customizing MCMC Suite

8 Sequential Monte Carlo and MCEM
  8.1 Particle Filters / Sequential Monte Carlo
    8.1.1 Filtering Algorithms
    8.1.2 Particle MCMC (PMCMC)
  8.2 Monte Carlo Expectation Maximization (MCEM)
    8.2.1 Estimating the Asymptotic Covariance From MCEM

9 Spatial models
  9.1 Intrinsic Gaussian CAR model: dcar_normal
    9.1.1 Specification and density
    9.1.2 Example
  9.2 Proper Gaussian CAR model: dcar_proper
    9.2.1 Specification and density
    9.2.2 Example
  9.3 MCMC Sampling of CAR models
    9.3.1 Initial values
    9.3.2 Zero-neighbor regions
    9.3.3 Zero-mean constraint

10 Bayesian nonparametric models
  10.1 Bayesian nonparametric mixture models
  10.2 Chinese Restaurant Process model
    10.2.1 Specification and density
    10.2.2 Example
  10.3 Stick-breaking model
    10.3.1 Specification and function
    10.3.2 Example
  10.4 MCMC sampling of BNP models
    10.4.1 Sampling CRP models
    10.4.2 Sampling stick-breaking models

IV Programming with NIMBLE

Overview

11 Writing simple nimbleFunctions
  11.1 Introduction to simple nimbleFunctions
  11.2 R functions (or variants) implemented in NIMBLE
    11.2.1 Finding help for NIMBLE’s versions of R functions
    11.2.2 Basic operations
    11.2.3 Math and linear algebra
    11.2.4 Distribution functions
    11.2.5 Flow control: if-then-else, for, while, and stop
    11.2.6 print and cat
    11.2.7 Checking for user interrupts: checkInterrupt
    11.2.8 Optimization: optim and nimOptim
    11.2.9 ‘nim’ synonyms for some functions
  11.3 How NIMBLE handles types of variables
    11.3.1 nimbleList data structures
    11.3.2 How numeric types work
  11.4 Declaring argument and return types
  11.5 Compiled nimbleFunctions pass arguments by reference
  11.6 Calling external compiled code
  11.7 Calling uncompiled R functions from compiled nimbleFunctions

12 Creating user-defined BUGS distributions and functions
  12.1 User-defined functions
  12.2 User-defined distributions
    12.2.1 Using registerDistributions for alternative parameterizations and providing other information

13 Working with NIMBLE models
  13.1 The variables and nodes in a NIMBLE model
    13.1.1 Determining the nodes in a model
    13.1.2 Understanding lifted nodes
    13.1.3 Determining dependencies in a model
  13.2 Accessing information about nodes and variables
    13.2.1 Getting distributional information about a node
    13.2.2 Getting information about a distribution
    13.2.3 Getting distribution parameter values for a node
    13.2.4 Getting distribution bounds for a node
  13.3 Carrying out model calculations
    13.3.1 Core model operations: calculation and simulation
    13.3.2 Pre-defined nimbleFunctions for operating on model nodes: simNodes, calcNodes, and getLogProbNodes
    13.3.3 Accessing log probabilities via logProb variables

14 Data structures in NIMBLE
  14.1 The modelValues data structure
    14.1.1 Creating modelValues objects
    14.1.2 Accessing contents of modelValues
  14.2 The nimbleList data structure
    14.2.1 Using eigen and svd in nimbleFunctions

15 Writing nimbleFunctions to interact with models
  15.1 Overview
  15.2 Using and compiling nimbleFunctions
  15.3 Writing setup code
    15.3.1 Useful tools for setup functions
    15.3.2 Accessing and modifying numeric values from setup
    15.3.3 Determining numeric types in nimbleFunctions
    15.3.4 Control of setup outputs
  15.4 Writing run code
    15.4.1 Driving models: calculate, calculateDiff, simulate, getLogProb
    15.4.2 Getting and setting variable and node values
    15.4.3 Getting parameter values and node bounds
    15.4.4 Using modelValues objects
    15.4.5 Using model variables and modelValues in expressions
    15.4.6 Including other methods in a nimbleFunction
    15.4.7 Using other nimbleFunctions
    15.4.8 Virtual nimbleFunctions and nimbleFunctionLists
    15.4.9 Character objects
    15.4.10 User-defined data structures
  15.5 Example: writing user-defined samplers to extend NIMBLE’s MCMC engine
  15.6 Copying nimbleFunctions (and NIMBLE models)
  15.7 Debugging nimbleFunctions
  15.8 Timing nimbleFunctions with run.time
  15.9 Clearing and unloading compiled objects
  15.10 Reducing memory usage

Part I

Introduction


Chapter 1

Welcome to NIMBLE

NIMBLE is a system for building and sharing analysis methods for statistical models from R, especially for hierarchical models and computationally-intensive methods. While NIMBLE is embedded in R, it goes beyond R by supporting separate programming of models and algorithms along with compilation for fast execution.

As of version 0.6.13, NIMBLE has been around for a while and is reasonably stable, but we have many plans to expand and improve it. The algorithm library provides MCMC with extensive user control and the ability to write new samplers easily. Other algorithms include particle filtering (sequential Monte Carlo) and Monte Carlo Expectation Maximization (MCEM).

But NIMBLE is about much more than providing an algorithm library. It provides a language for writing model-generic algorithms. We hope you will program in NIMBLE and make an R package providing your method. Of course, NIMBLE is open source, so we also hope you’ll contribute to its development.

Please join the mailing lists (see R-nimble.org/more/issues-and-groups) and help improve NIMBLE by telling us what you want to do with it, what you like, and what could be better. We have a lot of ideas for how to improve it, but we want your help and ideas too. You can also follow and contribute to developer discussions on the wiki of our GitHub repository.

If you use NIMBLE in your work, please cite us, as this helps justify past and future funding for the development of NIMBLE. For more information, run citation('nimble') in R.

1.1 What does NIMBLE do?

NIMBLE makes it easier to program statistical algorithms that will run efficiently and work on many different models from R.

You can think of NIMBLE as comprising four pieces:

1. A system for writing statistical models flexibly, which is an extension of the BUGS language.¹
2. A library of algorithms such as MCMC.
3. A language, called NIMBLE, embedded within and similar in style to R, for writing algorithms that operate on models written in BUGS.
4. A compiler that generates C++ for your models and algorithms, compiles that C++, and lets you use it seamlessly from R without knowing anything about C++.

¹ See Chapter 5 for information about NIMBLE’s version of BUGS.

NIMBLE stands for Numerical Inference for statistical Models for Bayesian and Likelihood Estimation.

Although NIMBLE was motivated by algorithms for hierarchical statistical models, it’s useful for other goals too. You could use it for simpler models. And since NIMBLE can automatically compile R-like functions into C++ that use the Eigen library for fast linear algebra, you can use it to program fast numerical functions without any model involved.²

One of the beauties of R is that many of the high-level analysis functions are themselves written in R, so it is easy to see their code and modify them. The same is true for NIMBLE: the algorithms are themselves written in the NIMBLE language.

1.2 How to use this manual

We suggest everyone start with the Lightning Introduction in Chapter 2.

Then, if you want to jump into using NIMBLE’s algorithms without learning about NIMBLE’s programming system, go to Part II to learn how to build your model and Part III to learn how to apply NIMBLE’s built-in algorithms to your model.

If you want to learn about NIMBLE programming (nimbleFunctions), go to Part IV. This teaches how to program user-defined functions or distributions to use in BUGS code, compile your R code for faster operations, and write algorithms with NIMBLE. These algorithms could be specific algorithms for your particular model (such as a user-defined MCMC sampler for a parameter in your model) or general algorithms you can distribute to others. In fact, the algorithms provided as part of NIMBLE and described in Part III are written as nimbleFunctions.

² The packages Rcpp and RcppEigen provide different ways of connecting C++, the Eigen library and R. In those packages you program directly in C++, while in NIMBLE you program in R in a nimbleFunction and the NIMBLE compiler turns it into C++.

Chapter 2

Lightning introduction

2.1 A brief example

Here we’ll give a simple example of building a model and running some algorithms on the model, as well as creating our own user-specified algorithm. The goal is to give you a sense of what one can do in the system. Later sections will provide more detail.

We’ll use the pump model example from BUGS.¹ We could load the model from the standard BUGS example file formats (Section 6.1.2), but instead we’ll show how to enter it directly in R.

In this ‘lightning introduction’ we will:

1. Create the model for the pump example.
2. Compile the model.
3. Create a basic MCMC configuration for the pump model.
4. Compile and run the MCMC.
5. Customize the MCMC configuration and compile and run that.
6. Create, compile and run a Monte Carlo Expectation Maximization (MCEM) algorithm, which illustrates some of the flexibility NIMBLE provides to combine R and NIMBLE.
7. Write a short nimbleFunction to generate simulations from designated nodes of any model.

2.2 Creating a model

First we define the model code, its constants, data, and initial values for MCMC.

library(nimble)

pumpCode <- nimbleCode({
  for (i in 1:N){
    theta[i] ~ dgamma(alpha, beta)
    lambda[i] <- theta[i]*t[i]
    x[i] ~ dpois(lambda[i])
  }
  alpha ~ dexp(1.0)
  beta ~ dgamma(0.1, 1.0)
})

pumpConsts <- list(N = 10,
                   t = c(94.3, 15.7, 62.9, 126, 5.24,
                         31.4, 1.05, 1.05, 2.1, 10.5))

pumpData <- list(x = c(5, 1, 5, 14, 3, 19, 1, 1, 4, 22))

pumpInits <- list(alpha = 1, beta = 1,
                  theta = rep(0.1, pumpConsts$N))

¹ The data set describes failure rates of some pumps.

Here x[i] is the number of failures recorded during a time duration of length t[i] for the i-th pump. theta[i] is a failure rate, and the goal is to estimate the parameters alpha and beta. Now let’s create the model and look at some of its nodes.

pump <- nimbleModel(code = pumpCode, name = "pump", constants = pumpConsts,
                    data = pumpData, inits = pumpInits)

pump$getNodeNames()

## [1] "alpha" "beta" "lifted_d1_over_beta"

## [4] "theta[1]" "theta[2]" "theta[3]"

## [7] "theta[4]" "theta[5]" "theta[6]"

## [10] "theta[7]" "theta[8]" "theta[9]"

## [13] "theta[10]" "lambda[1]" "lambda[2]"

## [16] "lambda[3]" "lambda[4]" "lambda[5]"

## [19] "lambda[6]" "lambda[7]" "lambda[8]"

## [22] "lambda[9]" "lambda[10]" "x[1]"

## [25] "x[2]" "x[3]" "x[4]"

## [28] "x[5]" "x[6]" "x[7]"

## [31] "x[8]" "x[9]" "x[10]"

pump$x

## [1] 5 1 5 14 3 19 1 1 4 22

pump$logProb_x

## [1] -2.998011 -1.118924 -1.882686 -2.319466 -4.254550 -20.739651

## [7] -2.358795 -2.358795 -9.630645 -48.447798

pump$alpha

## [1] 1


pump$theta

## [1] 0.1 0.1 0.1 0.1 0.1 0.1 0.1 0.1 0.1 0.1

pump$lambda

## [1] 9.430 1.570 6.290 12.600 0.524 3.140 0.105 0.105 0.210 1.050
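As a check on what logProb_x holds, the following base-R sketch recomputes the printed values from the Poisson density, using lambda[i] = theta[i] * t[i] at the initial values theta[i] = 0.1. It assumes, as the matching numbers suggest, that NIMBLE's dpois log-density agrees with R's dpois. The variable name times is a stand-in for the model's t, chosen only to avoid shadowing R's t() function.

```r
# Recompute pump$logProb_x at the initial values with base R.
times <- c(94.3, 15.7, 62.9, 126, 5.24, 31.4, 1.05, 1.05, 2.1, 10.5)  # t[i]
x <- c(5, 1, 5, 14, 3, 19, 1, 1, 4, 22)                              # data
theta <- rep(0.1, 10)        # initial values from pumpInits
lambda <- theta * times      # deterministic nodes: lambda[i] <- theta[i]*t[i]
logProb_x <- dpois(x, lambda, log = TRUE)  # one log-probability per x[i]
round(logProb_x, 6)
sum(logProb_x)               # the quantity pump$getLogProb("x") reports
```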

Notice that in the list of nodes, NIMBLE has introduced a new node, lifted_d1_over_beta. We call this a ‘lifted’ node. Like R, NIMBLE allows alternative parameterizations, such as the scale or rate parameterization of the gamma distribution. In this model, beta is given as the rate of the gamma distribution for each theta[i], and the lifted node holds 1/beta, the corresponding scale. Choice of parameterization can generate a lifted node, as can using a link function or a distribution argument that is an expression. It’s helpful to know why they exist, but you shouldn’t need to worry about them.
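The rate and scale parameterizations of the gamma distribution describe the same density when scale = 1/rate, which is the relationship behind a node like lifted_d1_over_beta. This base-R sketch checks that equivalence; the values of alpha, beta and theta are arbitrary and chosen only for illustration.

```r
# Gamma log-density with rate beta versus scale 1/beta: they coincide.
alpha <- 2
beta <- 2.5
theta <- c(0.1, 0.5, 1.7)
d_rate  <- dgamma(theta, shape = alpha, rate = beta, log = TRUE)
d_scale <- dgamma(theta, shape = alpha, scale = 1 / beta, log = TRUE)
all.equal(d_rate, d_scale)   # TRUE
```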

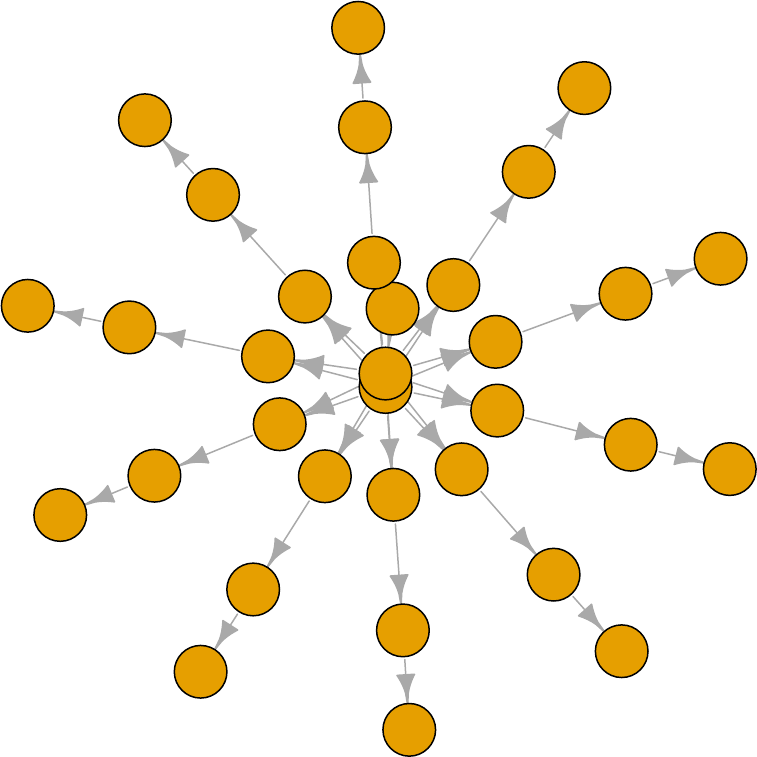

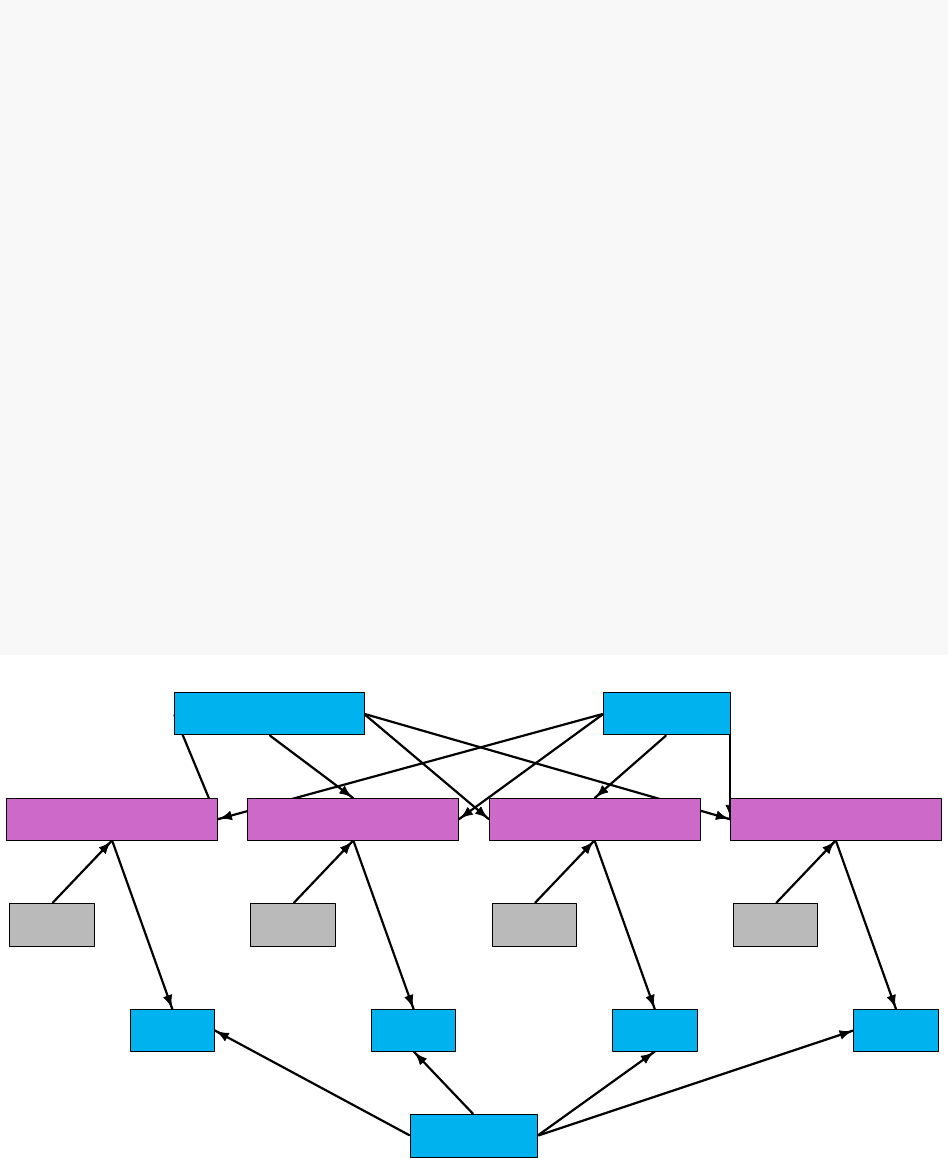

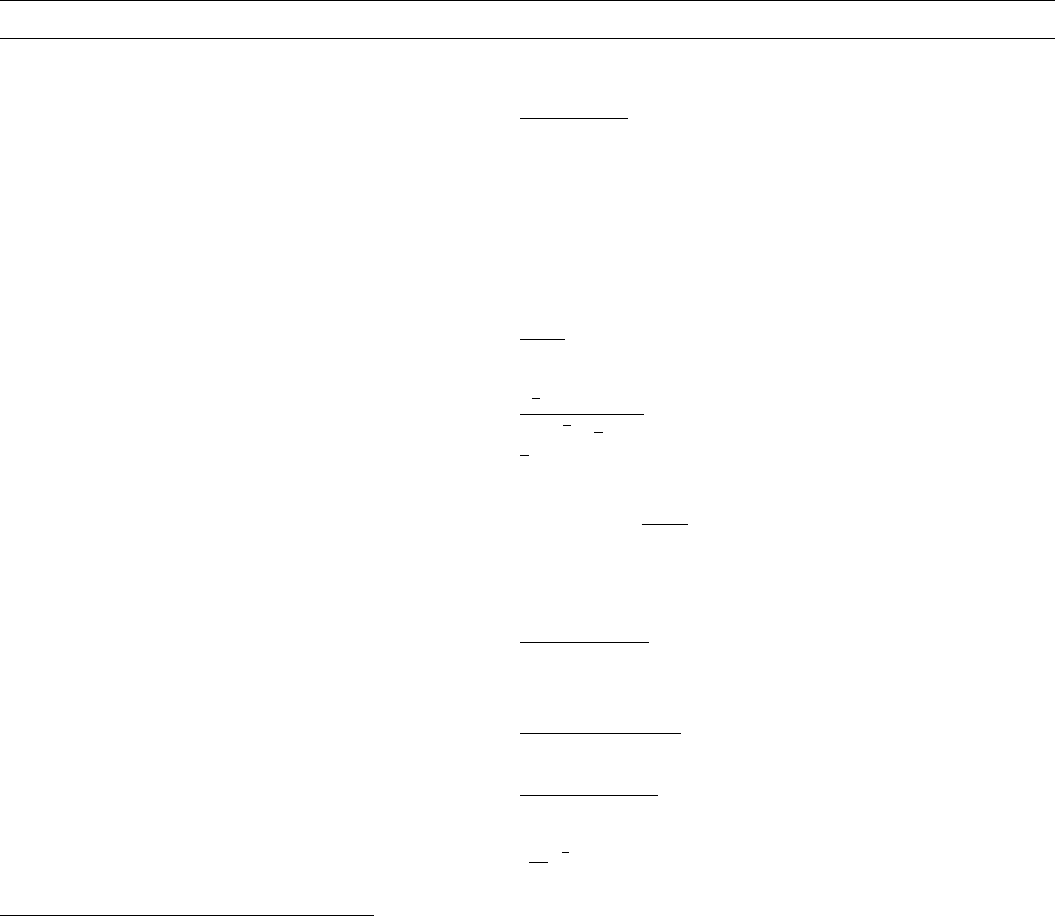

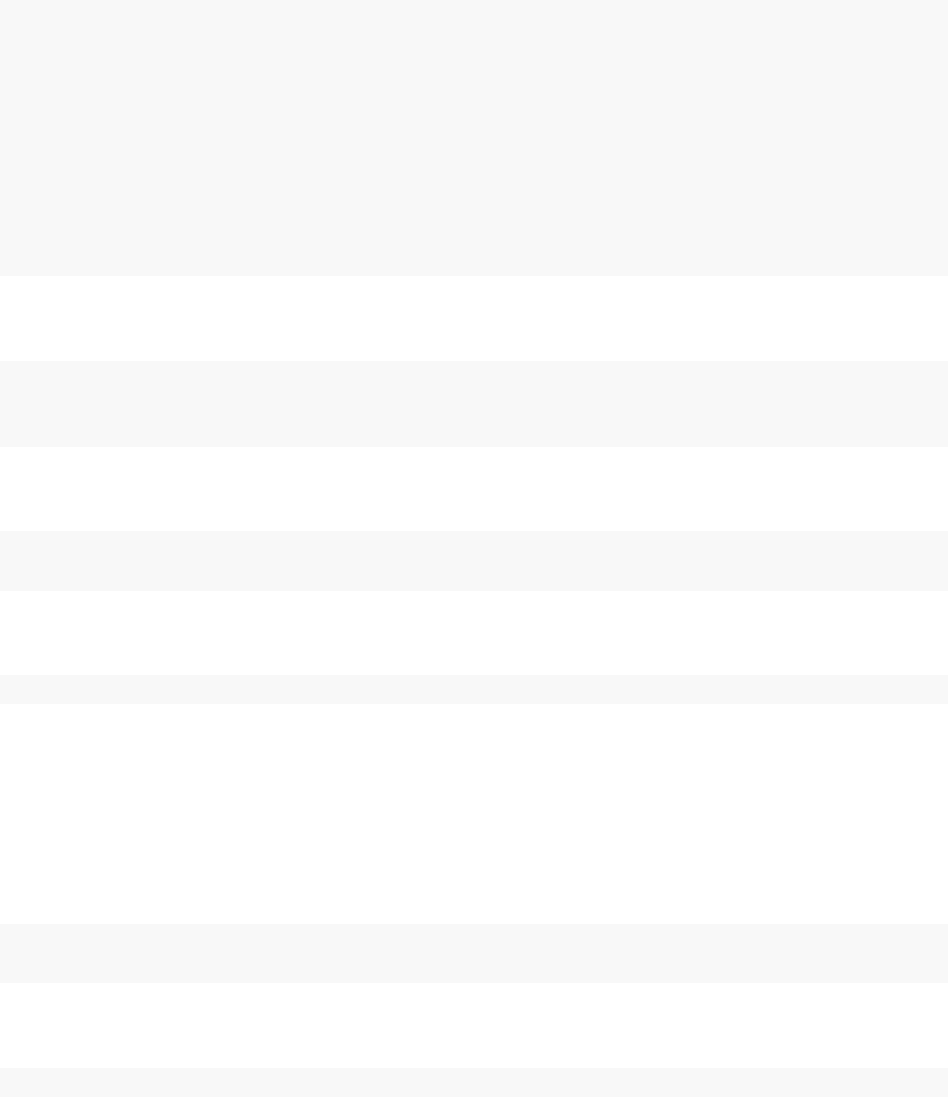

Thanks to the plotting capabilities of the igraph package that NIMBLE uses to represent the directed acyclic graph, we can plot the model (Figure 2.1).

pump$plotGraph()

You are in control of the model. By default, nimbleModel does its best to initialize a model, but let’s say you want to re-initialize theta. To simulate from the prior for theta (overwriting the initial values previously in the model), we first need to be sure the parent nodes of all theta[i] nodes are fully initialized, including any non-stochastic nodes such as lifted nodes. We then use the simulate function to simulate from the distribution for theta. Finally, we use the calculate function to calculate the dependencies of theta, namely lambda and the log probabilities of x, to ensure all parts of the model are up to date. First we show how to use the model’s getDependencies method to query information about its graph.

# Show all dependencies of alpha and beta terminating in stochastic nodes

pump$getDependencies(c("alpha","beta"))

## [1] "alpha" "beta" "lifted_d1_over_beta"

## [4] "theta[1]" "theta[2]" "theta[3]"

## [7] "theta[4]" "theta[5]" "theta[6]"

## [10] "theta[7]" "theta[8]" "theta[9]"

## [13] "theta[10]"

# Now show only the deterministic dependencies

pump$getDependencies(c("alpha","beta"), determOnly = TRUE)

## [1] "lifted_d1_over_beta"
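To make the two getDependencies results above concrete, here is a minimal base-R sketch of the kind of graph traversal involved, run on a hand-built graph for a three-pump version of the model. The names children, is_stoch and get_deps are hypothetical and this is not NIMBLE's implementation; in particular, NIMBLE returns nodes in topological order, which this sketch does not guarantee.

```r
# Hypothetical sketch of a dependency traversal (not NIMBLE's implementation).
N <- 3
th <- paste0("theta[", 1:N, "]")
la <- paste0("lambda[", 1:N, "]")
xs <- paste0("x[", 1:N, "]")

# children[[node]]: nodes that depend directly on `node`
children <- c(list(alpha = th,
                   beta = "lifted_d1_over_beta",
                   lifted_d1_over_beta = th),
              setNames(as.list(la), th),                 # theta[i] -> lambda[i]
              setNames(as.list(xs), la),                 # lambda[i] -> x[i]
              setNames(rep(list(character(0)), N), xs))  # x[i]: no children

# TRUE for stochastic nodes, FALSE for deterministic (including lifted) nodes
is_stoch <- c(alpha = TRUE, beta = TRUE, lifted_d1_over_beta = FALSE,
              setNames(rep(TRUE, N), th),
              setNames(rep(FALSE, N), la),
              setNames(rep(TRUE, N), xs))

# Requested nodes plus descendants: keep descending through deterministic
# nodes, and stop at (but include) the next stochastic nodes.
get_deps <- function(nodes, determOnly = FALSE) {
  deps <- nodes
  queue <- nodes
  while (length(queue) > 0) {
    node <- queue[1]
    queue <- queue[-1]
    for (child in children[[node]]) {
      if (child %in% deps) next
      deps <- c(deps, child)
      if (!is_stoch[[child]]) queue <- c(queue, child)
    }
  }
  if (determOnly) deps <- deps[!is_stoch[deps]]
  deps
}

get_deps(c("alpha", "beta"))
get_deps(c("alpha", "beta"), determOnly = TRUE)   # "lifted_d1_over_beta"
get_deps(th)                                       # theta, lambda and x nodes
```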


Figure 2.1: Directed Acyclic Graph plot of the pump model, thanks to the igraph package. [Plot not reproduced here. It shows the nodes alpha, beta, lifted_d1_over_beta, theta[1] to theta[10], lambda[1] to lambda[10], and x[1] to x[10].]


# Check that the lifted node was initialized.

pump[["lifted_d1_over_beta"]] # It was.

## [1] 1

# Now let's simulate new theta values

set.seed(1) # This makes the simulations here reproducible

pump$simulate("theta")

pump$theta # the new theta values

## [1] 0.15514136 1.88240160 1.80451250 0.83617765 1.22254365 1.15835525

## [7] 0.99001994 0.30737332 0.09461909 0.15720154

# lambda and logProb_x haven't been re-calculated yet

pump$lambda # these are the same values as above

## [1] 9.430 1.570 6.290 12.600 0.524 3.140 0.105 0.105 0.210 1.050

pump$logProb_x

## [1] -2.998011 -1.118924 -1.882686 -2.319466 -4.254550 -20.739651

## [7] -2.358795 -2.358795 -9.630645 -48.447798

pump$getLogProb("x") # The sum of logProb_x

## [1] -96.10932

pump$calculate(pump$getDependencies(c("theta")))

## [1] -262.204

pump$lambda # Now they have.

## [1] 14.6298299 29.5537051 113.5038360 105.3583839 6.4061287

## [6] 36.3723548 1.0395209 0.3227420 0.1987001 1.6506161

pump$logProb_x

## [1] -6.002009 -26.167496 -94.632145 -65.346457 -2.626123 -7.429868

## [7] -1.000761 -1.453644 -9.840589 -39.096527

Notice that the first getDependencies call returned dependencies from alpha and beta down to the next stochastic nodes in the model. The second call requested only deterministic dependencies. The call to pump$simulate("theta") expands "theta" to include all nodes in theta. After simulating into theta, we can see that lambda and the log probabilities of x still reflect the old values of theta, so we calculate them and then see that they have been updated. The value returned by calculate, -262.204, is the sum of the log probabilities of the stochastic nodes that were calculated, namely the theta[i] and x[i] nodes.
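The value returned by calculate can be reproduced outside NIMBLE as a check. This base-R sketch takes the theta values printed above (rounded to 8 decimals), recomputes lambda, and sums the gamma log-densities of theta (with alpha = beta = 1) and the Poisson log-probabilities of x. It assumes NIMBLE's dgamma and dpois log-densities agree with R's, which the matching lambda and logProb_x values support.

```r
# Reproduce pump$calculate(pump$getDependencies("theta")) in base R.
times <- c(94.3, 15.7, 62.9, 126, 5.24, 31.4, 1.05, 1.05, 2.1, 10.5)  # t[i]
x <- c(5, 1, 5, 14, 3, 19, 1, 1, 4, 22)
alpha <- 1
beta <- 1
theta <- c(0.15514136, 1.88240160, 1.80451250, 0.83617765, 1.22254365,
           1.15835525, 0.99001994, 0.30737332, 0.09461909, 0.15720154)
lambda <- theta * times   # deterministic dependencies, now up to date
# stochastic nodes among the dependencies: theta[i] and x[i]
logProb_theta <- dgamma(theta, shape = alpha, rate = beta, log = TRUE)
logProb_x <- dpois(x, lambda, log = TRUE)
total <- sum(logProb_theta) + sum(logProb_x)
round(total, 3)   # -262.204
```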


2.3 Compiling the model

Next we compile the model, which means generating C++ code, compiling that code, and loading it back into R with an object that can be used just like the uncompiled model. The values in the compiled model will be initialized from those of the original model in R, but the original and compiled models are distinct objects, so any subsequent changes in one will not be reflected in the other.

Cpump <- compileNimble(pump)

Cpump$theta

## [1] 0.15514136 1.88240160 1.80451250 0.83617765 1.22254365 1.15835525

## [7] 0.99001994 0.30737332 0.09461909 0.15720154

Note that the compiled model is used when running any NIMBLE algorithms via C++, so the model needs to be compiled before (or at the same time as) any compilation of algorithms, such as the compilation of the MCMC done in the following sections.

2.4 One-line invocation of MCMC

The most direct approach to invoking NIMBLE’s MCMC engine is using the nimbleMCMC function. This function generally takes the code, data, constants, and initial values as input, but it can also accept the (compiled or uncompiled) model object as an argument. It provides a variety of options for executing and controlling multiple chains of NIMBLE’s default MCMC algorithm, and for returning posterior samples, posterior summary statistics, and/or WAIC values.

For example, to execute two MCMC chains of 10,000 samples each, and return samples, summary statistics, and WAIC values:

mcmc.out <- nimbleMCMC(code = pumpCode, constants = pumpConsts,
                       data = pumpData, inits = pumpInits,
                       nchains = 2, niter = 10000,
                       summary = TRUE, WAIC = TRUE,
                       monitors = c('alpha', 'beta', 'theta'))

names(mcmc.out)

## [1] "samples" "summary" "WAIC"

mcmc.out$summary

## $chain1

## Mean Median St.Dev. 95%CI_low 95%CI_upp

## alpha 0.69804352 0.65835063 0.27037676 0.287898244 1.3140461

## beta 0.92862598 0.82156847 0.54969128 0.183699137 2.2872696

## theta[1] 0.06019274 0.05676327 0.02544956 0.021069950 0.1199230

## theta[2] 0.10157737 0.08203988 0.07905076 0.008066869 0.3034085


## theta[3] 0.08874755 0.08396502 0.03760562 0.031186960 0.1769982

## theta[4] 0.11567784 0.11301465 0.03012598 0.064170937 0.1824525

## theta[5] 0.60382223 0.54935089 0.31219612 0.159731108 1.3640771

## theta[6] 0.61204831 0.60085518 0.13803302 0.372712375 0.9135269

## theta[7] 0.90263434 0.70803389 0.73960182 0.074122175 2.7598261

## theta[8] 0.89021051 0.70774794 0.72668155 0.072571029 2.8189252

## theta[9] 1.57678136 1.44390008 0.76825189 0.455195149 3.4297368

## theta[10] 1.98954127 1.96171250 0.42409802 1.241383787 2.9012192

##

## $chain2

## Mean Median St.Dev. 95%CI_low 95%CI_upp

## alpha 0.69101961 0.65803654 0.26548378 0.277195564 1.2858148

## beta 0.91627273 0.81434426 0.53750825 0.185772263 2.2702428

## theta[1] 0.05937364 0.05611283 0.02461866 0.020956151 0.1161870

## theta[2] 0.10017726 0.08116259 0.07855024 0.008266343 0.3010355

## theta[3] 0.08908126 0.08390782 0.03704170 0.031330829 0.1736876

## theta[4] 0.11592652 0.11356920 0.03064645 0.063595333 0.1829574

## theta[5] 0.59755632 0.54329373 0.31871551 0.149286703 1.3748728

## theta[6] 0.61080189 0.59946693 0.13804343 0.371373877 0.9097319

## theta[7] 0.89902759 0.70901502 0.72930369 0.076243503 2.7441445

## theta[8] 0.89954594 0.70727079 0.73345905 0.071250926 2.8054633

## theta[9] 1.57530029 1.45005738 0.75242164 0.469959364 3.3502795

## theta[10] 1.98911473 1.96227061 0.42298189 1.246910723 2.9102326

##

## $all.chains

## Mean Median St.Dev. 95%CI_low 95%CI_upp

## alpha 0.69453156 0.65803654 0.26795776 0.28329854 1.2999319

## beta 0.92244935 0.81828160 0.54365539 0.18549077 2.2785444

## theta[1] 0.05978319 0.05646474 0.02504028 0.02102807 0.1183433

## theta[2] 0.10087731 0.08162361 0.07880204 0.00811108 0.3017967

## theta[3] 0.08891440 0.08394667 0.03732417 0.03123228 0.1749967

## theta[4] 0.11580218 0.11326039 0.03038683 0.06385253 0.1827382

## theta[5] 0.60068928 0.54668011 0.31548032 0.15363752 1.3686801

## theta[6] 0.61142510 0.60015416 0.13803618 0.37203765 0.9122467

## theta[7] 0.90083096 0.70852800 0.73445465 0.07550465 2.7534885

## theta[8] 0.89487822 0.70761105 0.73007484 0.07211191 2.8067373

## theta[9] 1.57604083 1.44719278 0.76035931 0.46374515 3.3866706

## theta[10] 1.98932800 1.96195345 0.42352979 1.24334249 2.9068229

mcmc.out$WAIC

## [1] 48.69896

See Section 7.1 or help(nimbleMCMC) for more details about using nimbleMCMC.
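The five summary columns above can be computed from any matrix of samples (rows are iterations, columns are monitored nodes). This base-R sketch is illustrative: summarize_samples is a hypothetical name, and it assumes the 95% interval bounds are the 2.5% and 97.5% sample quantiles using R's default quantile type; nimbleMCMC's exact convention may differ.

```r
# Per-node posterior summaries from a samples matrix.
summarize_samples <- function(samples) {
  t(apply(samples, 2, function(v) c(
    Mean        = mean(v),
    Median      = median(v),
    St.Dev.     = sd(v),
    `95%CI_low` = unname(quantile(v, 0.025)),
    `95%CI_upp` = unname(quantile(v, 0.975))
  )))
}

# Usage on a small artificial samples matrix with two "nodes"
toy <- cbind(a = c(1, 2, 3, 4, 5), b = c(2, 2, 2, 2, 2))
summarize_samples(toy)
```

With real output, summarize_samples(mcmc.out$samples$chain1) would give one row per monitored node, assuming the samples are stored per chain as the summary output above suggests.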

Note that the WAIC value varies depending on what quantities are treated as parameters; see Section 7.7 for more details.
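WAIC is built from pointwise log-likelihoods evaluated at each posterior sample, which is why the choice of what counts as a parameter matters: it determines what goes into each log-likelihood term. The sketch below uses one common variance-based definition; waic_from_loglik is a hypothetical name, and this is not necessarily the exact formula NIMBLE implements. For the pump model, conditioning on theta, one choice would be ll[s, i] = dpois(x[i], theta_s[i] * t[i], log = TRUE) for posterior sample s.

```r
# WAIC from an S x n matrix of pointwise log-likelihoods:
# ll[s, i] = log p(y_i | parameters from posterior sample s).
waic_from_loglik <- function(ll) {
  # log pointwise predictive density, via a numerically stable log-mean-exp
  lppd_i <- apply(ll, 2, function(v) {
    m <- max(v)
    m + log(mean(exp(v - m)))
  })
  # effective number of parameters: posterior variance of each column
  p_waic_i <- apply(ll, 2, var)
  lppd <- sum(lppd_i)
  p_waic <- sum(p_waic_i)
  c(WAIC = -2 * (lppd - p_waic), lppd = lppd, pWAIC = p_waic)
}

# Usage on a small artificial matrix (3 samples x 2 observations)
ll <- rbind(c(-1.2, -0.7), c(-1.0, -0.9), c(-1.4, -0.8))
waic_from_loglik(ll)
```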


2.5 Creating, compiling and running a basic MCMC configuration

At this point we have initial values for all of the nodes in the model, and we have both the original and compiled versions of the model. As a first algorithm to try on our model, let’s use NIMBLE’s default MCMC. Note that conjugate relationships are detected for all nodes except alpha, for which the default sampler is a random-walk Metropolis sampler.
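To make these sampler assignments concrete, here is a minimal base-R sketch of the same scheme: a random-walk Metropolis step for alpha, then conjugate gamma draws for beta and each theta[i], whose closed forms follow from the gamma priors and the Poisson likelihood. It is illustrative only and not NIMBLE's implementation: NIMBLE's RW sampler adapts its proposal scale, whereas this sketch uses a fixed, hypothetical scale prop_sd, and pump_gibbs is a hypothetical name.

```r
# Base-R sketch of the pump MCMC: RW Metropolis for alpha, conjugate updates
# for beta and theta[i]. Proposal scale is fixed (no adaptation).
pump_gibbs <- function(niter, x, times, alpha = 1, beta = 1,
                       theta = rep(0.1, length(x)), prop_sd = 0.5) {
  N <- length(x)
  out <- matrix(NA_real_, niter, N + 2,
                dimnames = list(NULL, c("alpha", "beta",
                                        paste0("theta[", 1:N, "]"))))
  # log full conditional of alpha, up to a constant: dexp(1) prior times the
  # gamma(shape = alpha, rate = beta) densities of the current theta values
  log_post_alpha <- function(a) {
    if (a <= 0) return(-Inf)
    dexp(a, 1, log = TRUE) + sum(dgamma(theta, shape = a, rate = beta, log = TRUE))
  }
  for (iter in 1:niter) {
    # alpha: symmetric random-walk Metropolis step
    proposal <- alpha + rnorm(1, 0, prop_sd)
    if (log(runif(1)) < log_post_alpha(proposal) - log_post_alpha(alpha))
      alpha <- proposal
    # beta | alpha, theta ~ gamma(0.1 + N * alpha, rate = 1.0 + sum(theta))
    beta <- rgamma(1, shape = 0.1 + N * alpha, rate = 1.0 + sum(theta))
    # theta[i] | alpha, beta, x[i] ~ gamma(alpha + x[i], rate = beta + t[i])
    theta <- rgamma(N, shape = alpha + x, rate = beta + times)
    out[iter, ] <- c(alpha, beta, theta)
  }
  out
}

set.seed(1)
samp <- pump_gibbs(2000, x = c(5, 1, 5, 14, 3, 19, 1, 1, 4, 22),
                   times = c(94.3, 15.7, 62.9, 126, 5.24,
                             31.4, 1.05, 1.05, 2.1, 10.5))
round(colMeans(samp), 3)
```

The update order (alpha, then beta, then theta) mirrors the order of the samplers printed below, though the sketch makes no claim that NIMBLE's internal computations match it exactly.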

pumpConf <- configureMCMC(pump, print = TRUE)

## [1] RW sampler: alpha

## [2] conjugate_dgamma_dgamma sampler: beta

## [3] conjugate_dgamma_dpois sampler: theta[1]

## [4] conjugate_dgamma_dpois sampler: theta[2]

## [5] conjugate_dgamma_dpois sampler: theta[3]

## [6] conjugate_dgamma_dpois sampler: theta[4]

## [7] conjugate_dgamma_dpois sampler: theta[5]

## [8] conjugate_dgamma_dpois sampler: theta[6]

## [9] conjugate_dgamma_dpois sampler: theta[7]

## [10] conjugate_dgamma_dpois sampler: theta[8]

## [11] conjugate_dgamma_dpois sampler: theta[9]

## [12] conjugate_dgamma_dpois sampler: theta[10]

pumpConf$addMonitors(c("alpha","beta","theta"))

## thin = 1: alpha, beta, theta

pumpMCMC <- buildMCMC(pumpConf)

CpumpMCMC <- compileNimble(pumpMCMC, project = pump)

niter <- 1000

set.seed(1)

samples <- runMCMC(CpumpMCMC, niter = niter)

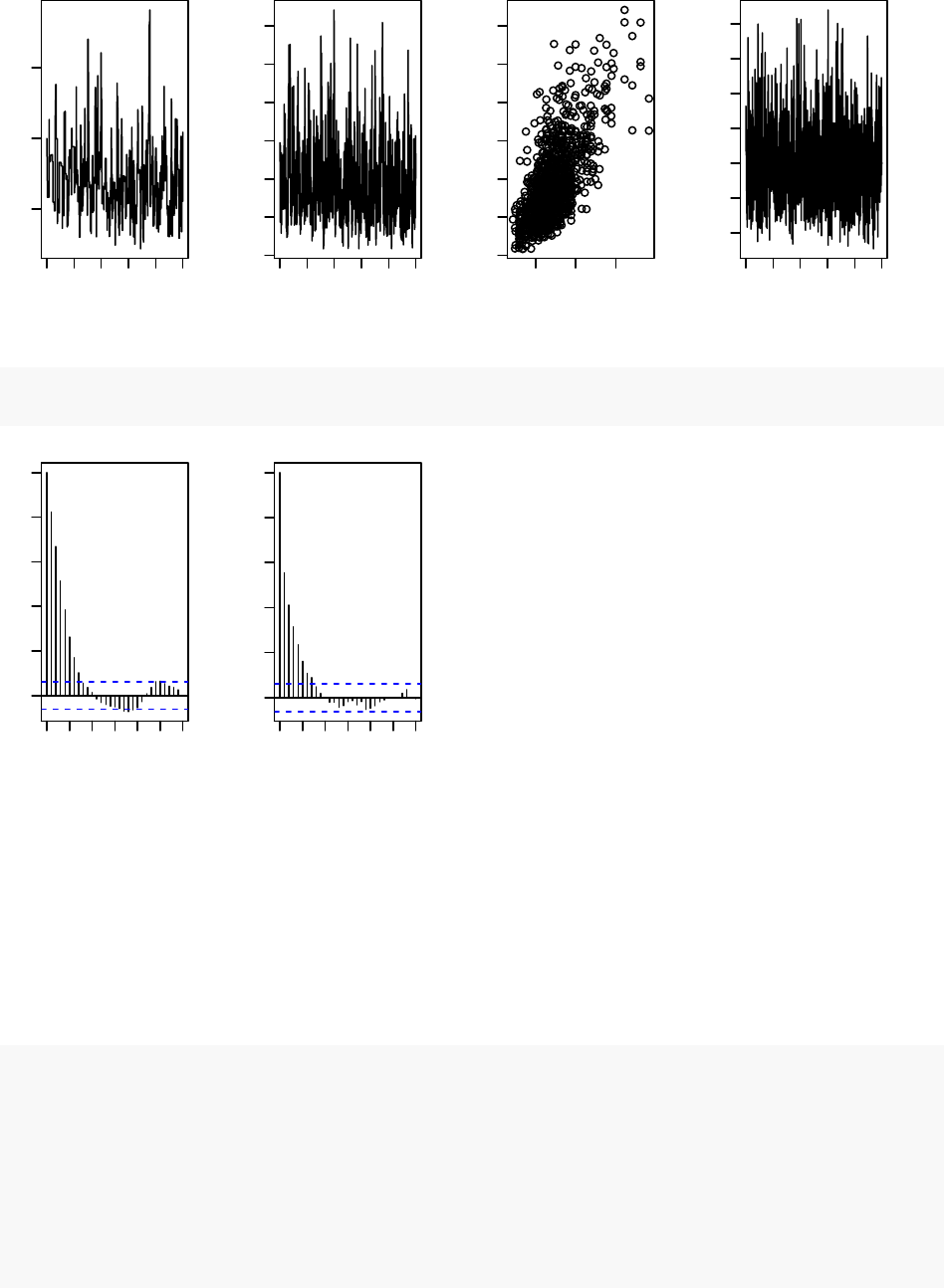

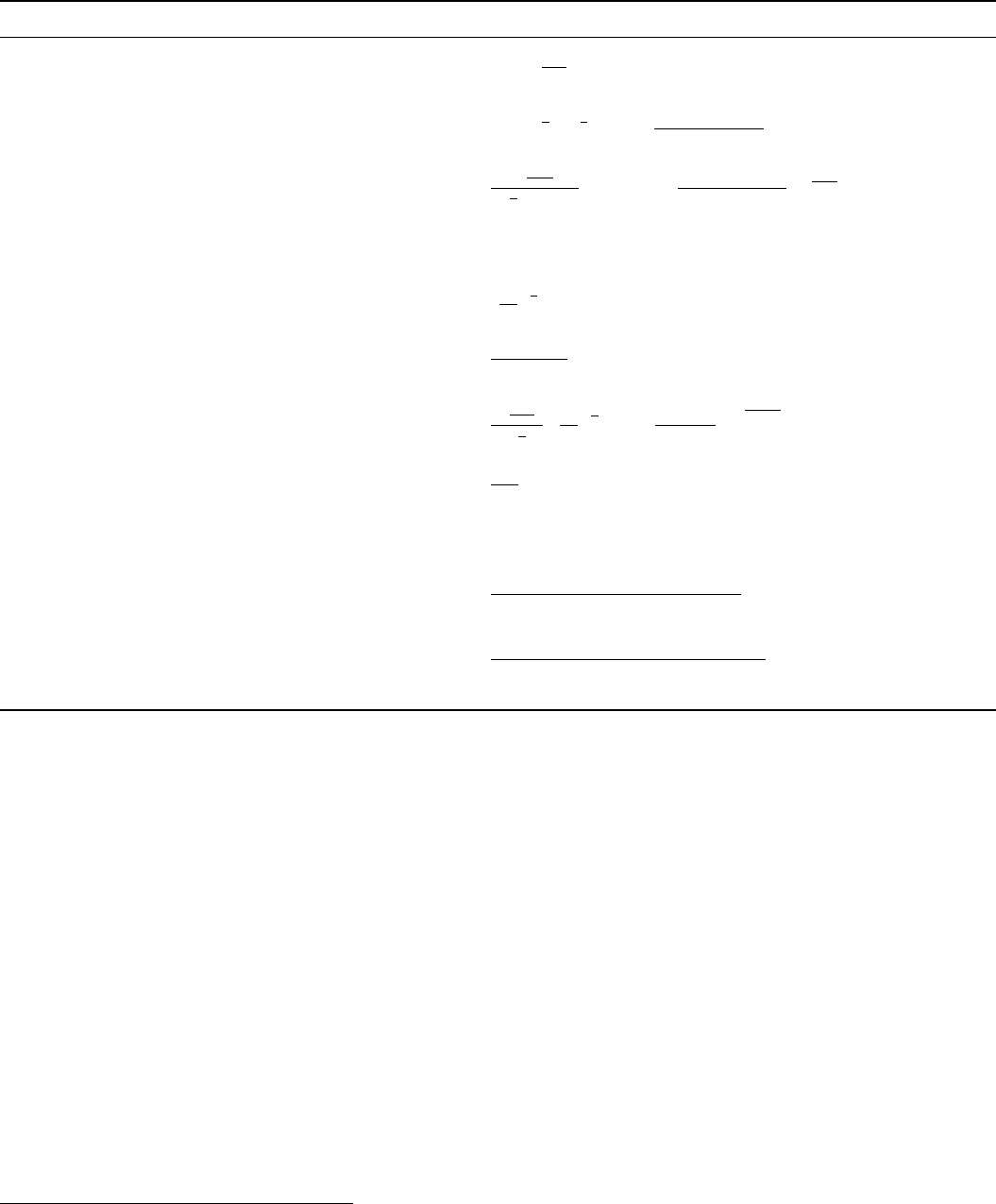

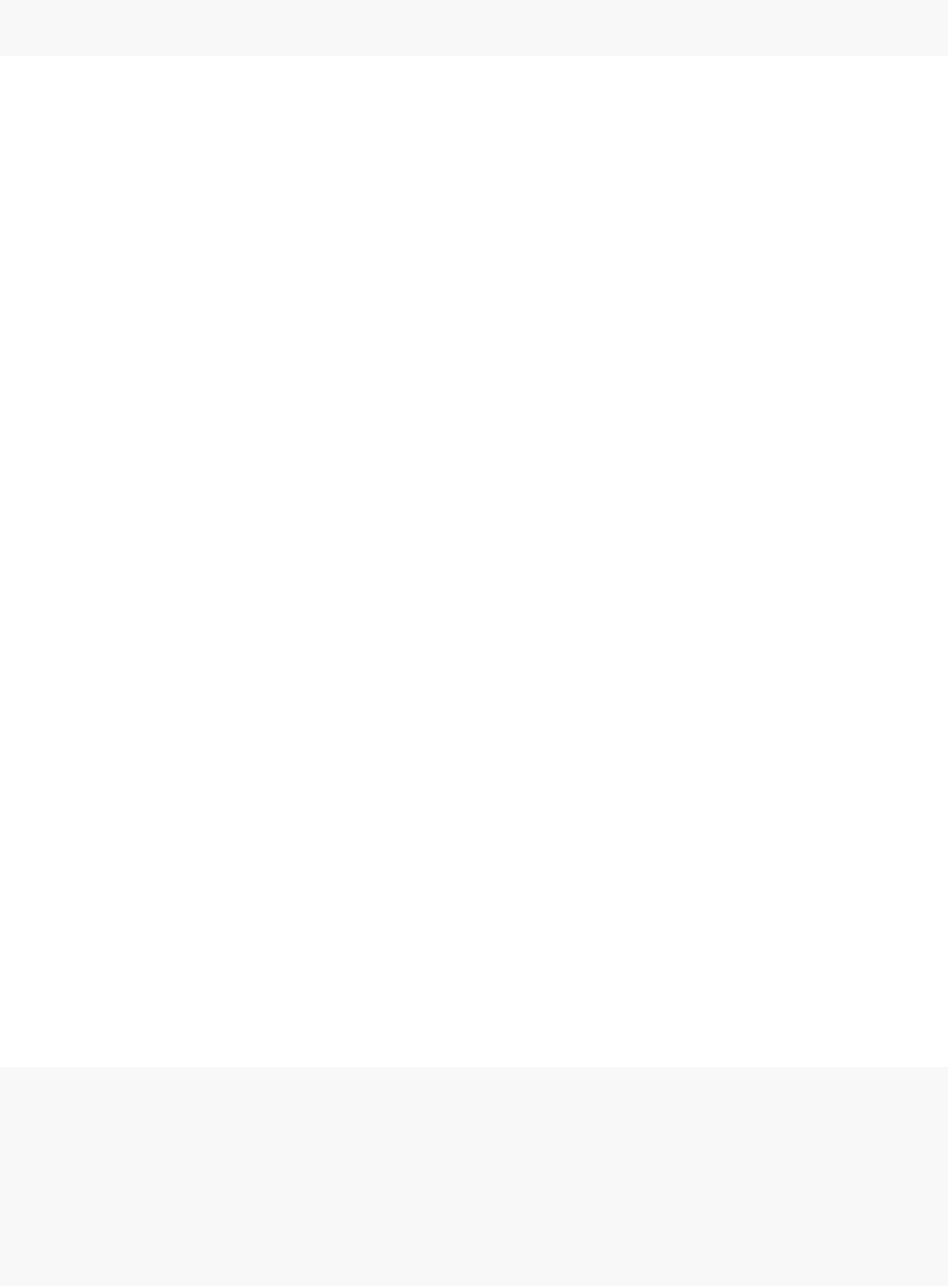

par(mfrow = c(1,4), mai = c(.6, .4, .1, .2))

plot(samples[ , "alpha"], type = "l",xlab = "iteration",

ylab = expression(alpha))

plot(samples[ , "beta"], type = "l",xlab = "iteration",

ylab = expression(beta))

plot(samples[ , "alpha"], samples[ , "beta"], xlab = expression(alpha),

ylab = expression(beta))

plot(samples[ , "theta[1]"], type = "l",xlab = "iteration",

ylab = expression(theta[1]))


[Figure: trace plots of alpha, beta and theta[1] against iteration, and a scatterplot of beta against alpha, for the default MCMC]

acf(samples[, "alpha"]) # plot autocorrelation of alpha sample

acf(samples[, "beta"]) # plot autocorrelation of beta sample

[Figure: autocorrelation (ACF) plots of the alpha and beta samples from the default MCMC, lags 0 to 25]

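Notice the posterior correlation between alpha and beta. The autocorrelation of each parameter’s samples, shown in the acf plots, is one measure of how well the chain mixes for that parameter: the more slowly the autocorrelation decays with lag, the worse the mixing.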
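To see numerically what the acf plots summarize, the sketch below compares two simulated AR(1) series, which stand in for a poorly mixing and a well mixing chain. These are hypothetical chains, not samples from the pump model; `acf(..., plot = FALSE)` returns the same estimates that the plots above draw.

```r
# Simulate an AR(1) series; larger phi means stronger autocorrelation.
simulate_ar1 <- function(n, phi) {
  x <- numeric(n)
  for (i in 2:n) x[i] <- phi * x[i - 1] + rnorm(1)
  x
}

set.seed(1)
slow_chain <- simulate_ar1(5000, phi = 0.95)  # mixes poorly
fast_chain <- simulate_ar1(5000, phi = 0.30)  # mixes well

# Element 1 of $acf is lag 0 (always 1), so elements 2, 6 and 26 are lags 1, 5 and 25.
lag_values <- function(chain) {
  acf(chain, lag.max = 25, plot = FALSE)$acf[c(2, 6, 26)]
}
round(rbind(slow = lag_values(slow_chain), fast = lag_values(fast_chain)), 2)
```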

2.6 Customizing the MCMC

Let’s add an adaptive block sampler on alpha and beta jointly and see if that improves the mixing.

pumpConf$addSampler(target = c("alpha","beta"), type = "RW_block",

control = list(adaptInterval = 100))

pumpMCMC2 <- buildMCMC(pumpConf)

# need to reset the nimbleFunctions in order to add the new MCMC

CpumpNewMCMC <- compileNimble(pumpMCMC2, project = pump,

resetFunctions = TRUE)


set.seed(1)

CpumpNewMCMC$run(niter)

## NULL

samplesNew <- as.matrix(CpumpNewMCMC$mvSamples)

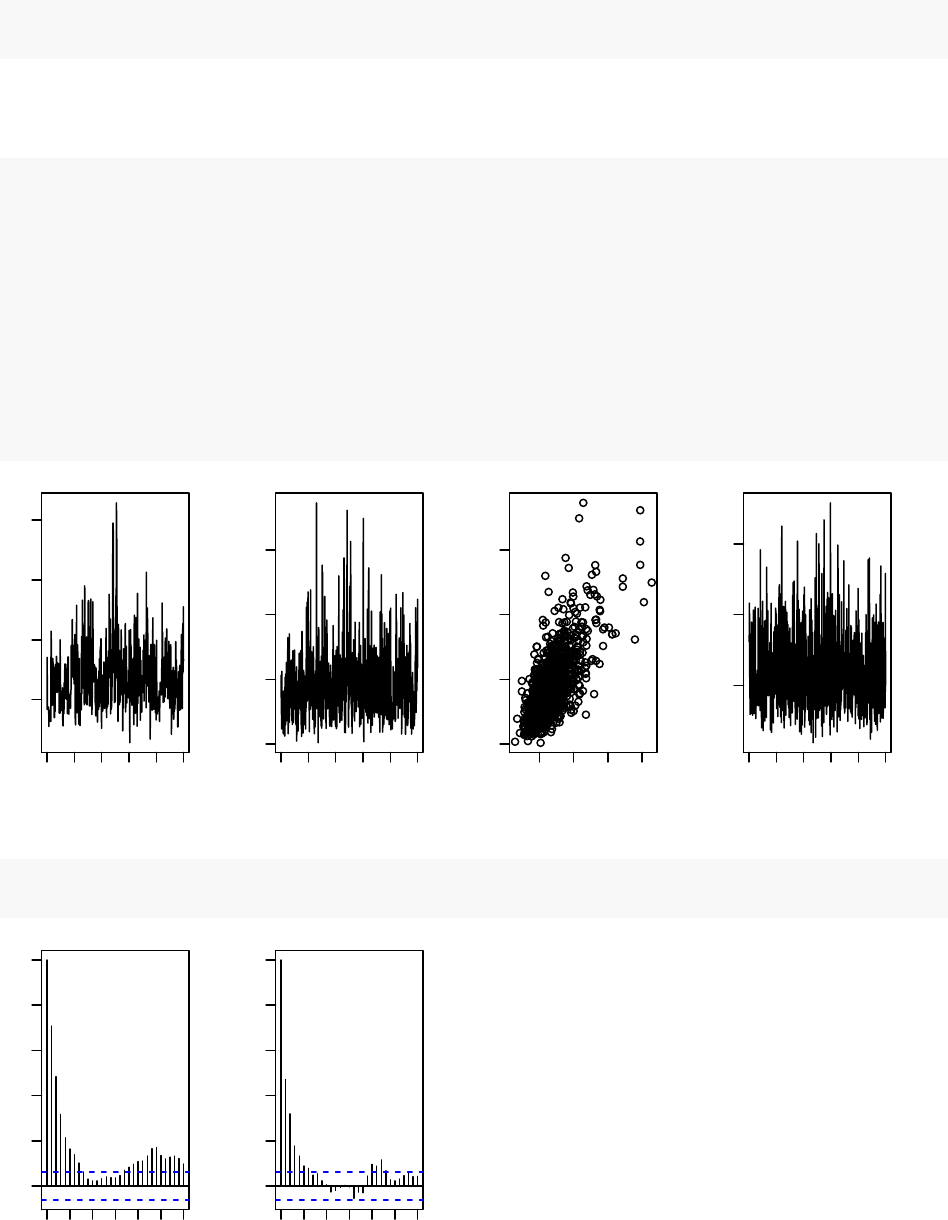

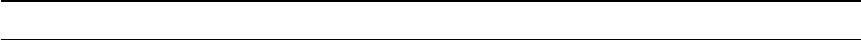

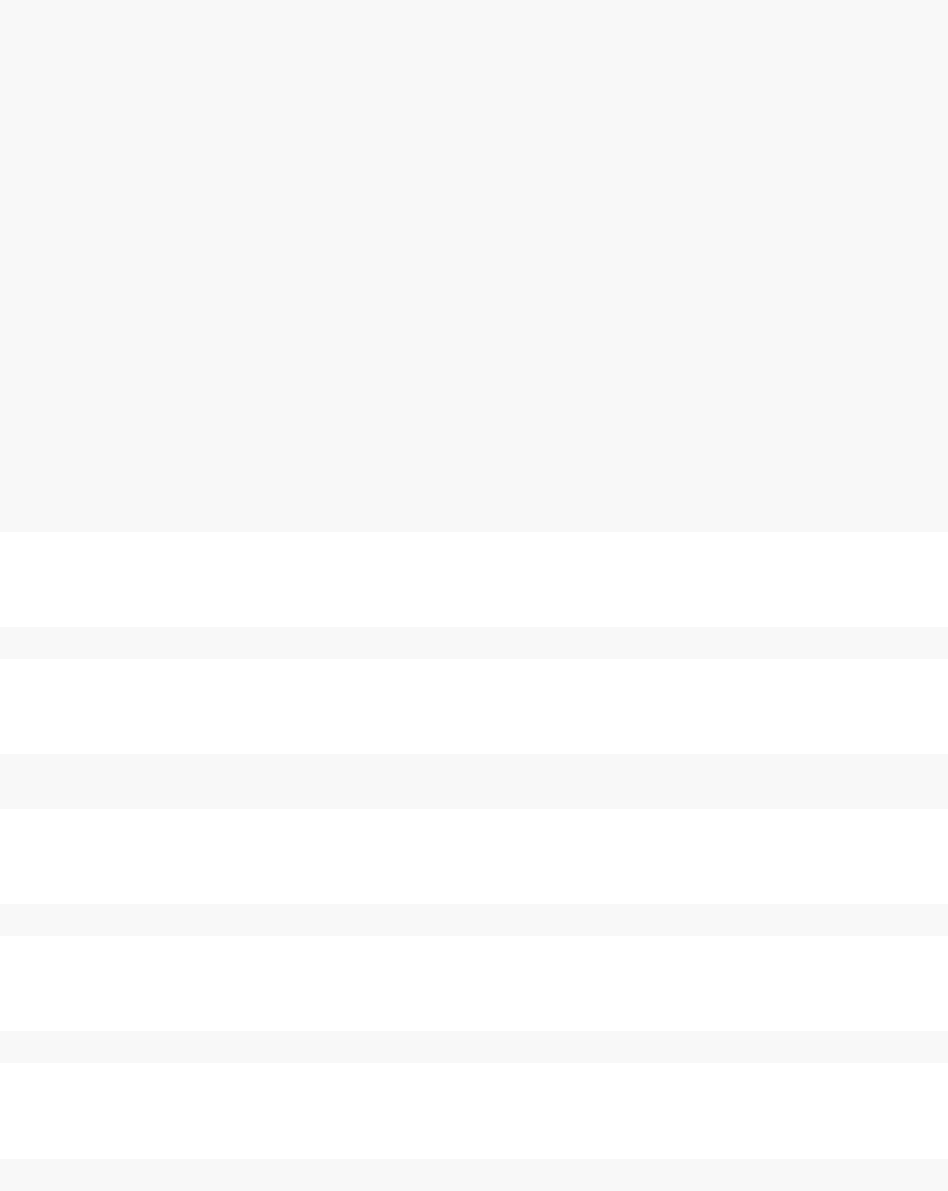

par(mfrow = c(1,4), mai = c(.6, .4, .1, .2))

plot(samplesNew[ , "alpha"], type = "l",xlab = "iteration",

ylab = expression(alpha))

plot(samplesNew[ , "beta"], type = "l",xlab = "iteration",

ylab = expression(beta))

plot(samplesNew[ , "alpha"], samplesNew[ , "beta"], xlab = expression(alpha),

ylab = expression(beta))

plot(samplesNew[ , "theta[1]"], type = "l",xlab = "iteration",

ylab = expression(theta[1]))

[Figure: trace plots of alpha, beta and theta[1] against iteration, and a scatterplot of beta against alpha, for the MCMC with the added block sampler]

acf(samplesNew[, "alpha"]) # plot autocorrelation of alpha sample

acf(samplesNew[, "beta"]) # plot autocorrelation of beta sample

[Figure: autocorrelation (ACF) plots of the alpha and beta samples from the MCMC with the added block sampler, lags 0 to 25]


We can see that the block sampler has decreased the autocorrelation for both alpha and beta. Of course, these are just short runs, and what we are really interested in is the effective sample size of the MCMC per unit of computation time, but that’s not the point of this example.
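Effective sample size (ESS) turns an autocorrelation curve into a single number: roughly, how many independent draws the chain is worth. The sketch below uses the simple estimate n / (1 + 2 Σ ρ_k), truncating the sum at the first non-positive autocorrelation. The truncation rule and the helper name `simple_ess` are assumptions for illustration; packages such as coda (`effectiveSize`) use more careful estimators. Dividing an ESS by the run time (for example from `system.time`) gives the ESS per second mentioned above.

```r
# Simple ESS estimate with the autocorrelation sum truncated at the first
# non-positive lag.
simple_ess <- function(chain, max_lag = 200) {
  rho <- acf(chain, lag.max = max_lag, plot = FALSE)$acf[-1]  # drop lag 0
  cutoff <- which(rho <= 0)[1]
  if (!is.na(cutoff)) rho <- rho[seq_len(cutoff - 1)]
  length(chain) / (1 + 2 * sum(rho))
}

set.seed(1)
n <- 10000
# AR(1) with coefficient 0.9 as a stand-in for an autocorrelated MCMC chain
ar1 <- as.numeric(stats::filter(rnorm(n), filter = 0.9, method = "recursive"))
iid <- rnorm(n)
ess <- c(ar1 = simple_ess(ar1), iid = simple_ess(iid))
round(ess)
```

For an AR(1) chain with coefficient 0.9, theory puts the ESS near n(1 - 0.9)/(1 + 0.9), about 5% of n, while independent draws give an ESS close to n.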

Once you learn the MCMC system, you can write your own samplers and include them. The entire

system is written in nimbleFunctions.
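To make the idea behind the block sampler used above concrete, here is a plain-R sketch of a non-adaptive block random walk Metropolis sampler. It proposes all target components jointly from a multivariate normal step, so a proposal covariance that follows the posterior correlation can move along a ridge like the one between alpha and beta. NIMBLE's RW_block sampler also adapts the proposal scale and covariance, which is omitted here; the function name `rw_block` and the correlated bivariate normal target are hypothetical.

```r
# Non-adaptive block random walk Metropolis.
# prop_chol is an upper-triangular Cholesky factor R of the proposal
# covariance (t(R) %*% R), as returned by chol().
rw_block <- function(log_target, init, prop_chol, niter) {
  d <- length(init)
  out <- matrix(NA_real_, niter, d)
  current <- init
  current_lp <- log_target(current)
  for (i in seq_len(niter)) {
    # joint proposal: z %*% R has covariance t(R) %*% R
    proposal <- current + as.vector(rnorm(d) %*% prop_chol)
    proposal_lp <- log_target(proposal)
    if (log(runif(1)) < proposal_lp - current_lp) {  # Metropolis acceptance
      current <- proposal
      current_lp <- proposal_lp
    }
    out[i, ] <- current
  }
  out
}

# Target: bivariate normal with correlation 0.9 (unnormalized log density)
Sigma <- matrix(c(1, 0.9, 0.9, 1), 2)
Sigma_inv <- solve(Sigma)
log_target <- function(z) -0.5 * sum(z * (Sigma_inv %*% z))

set.seed(1)
draws <- rw_block(log_target, init = c(0, 0),
                  prop_chol = chol(2.38^2 / 2 * Sigma), niter = 20000)
colMeans(draws)
cor(draws[, 1], draws[, 2])
```

The 2.38²/d scaling of the target covariance is a common heuristic starting point for random walk proposals; an adaptive sampler tunes this during the run instead.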

2.7 Running MCEM

NIMBLE is a system for working with algorithms, not just an MCMC engine. So let’s try maximizing the marginal likelihood for alpha and beta using Monte Carlo Expectation Maximization.²

pump2 <- pump$newModel()

box <- list(list(c("alpha","beta"), c(0,Inf)))

pumpMCEM <- buildMCEM(model = pump2, latentNodes = "theta[1:10]",

boxConstraints = box)

pumpMLE <- pumpMCEM$run()

## Iteration Number: 1.

## Current number of MCMC iterations: 1000.

## Parameter Estimates:

## alpha beta

## 0.8160625 1.1230921

## Convergence Criterion: 1.001.

## Iteration Number: 2.

## Current number of MCMC iterations: 1000.

## Parameter Estimates:

## alpha beta

## 0.8045037 1.1993128

## Convergence Criterion: 0.0223464.

## Monte Carlo error too big: increasing MCMC sample size.

## Iteration Number: 3.

## Current number of MCMC iterations: 1250.

## Parameter Estimates:

## alpha beta

## 0.8203178 1.2497067

## Convergence Criterion: 0.004913688.

## Monte Carlo error too big: increasing MCMC sample size.

## Monte Carlo error too big: increasing MCMC sample size.

## Monte Carlo error too big: increasing MCMC sample size.

## Iteration Number: 4.

## Current number of MCMC iterations: 3032.

² Note that for this model, one could analytically integrate over theta and then numerically maximize the resulting marginal likelihood.


## Parameter Estimates:

## alpha beta

## 0.8226618 1.2602452

## Convergence Criterion: 0.0004201048.

pumpMLE

## alpha beta

## 0.8226618 1.2602452

Both estimates are within 0.01 of the values reported by George et al. (1993).³ Some discrepancy is to be expected, since MCEM is a Monte Carlo algorithm.
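As a check on the MCEM result, the analytic route mentioned in the footnote can be sketched in plain R. Integrating theta[i] ~ Gamma(alpha, rate = beta) out of x[i] ~ Poisson(theta[i] * t[i]) gives a negative binomial marginal, x[i] ~ NegBin(size = alpha, prob = beta / (beta + t[i])), whose log-likelihood can be maximized with `optim`. This assumes the standard pump data values; the function name `neg_marginal_loglik` is hypothetical. The estimates should land close to the MCEM values above.

```r
# Assumed pump data: exposure times t and failure counts x.
pump_t <- c(94.3, 15.7, 62.9, 126, 5.24, 31.4, 1.05, 1.05, 2.1, 10.5)
pump_x <- c(5, 1, 5, 14, 3, 19, 1, 1, 4, 22)

# Negative marginal log-likelihood, parameterized on the log scale so that
# alpha > 0 and beta > 0 without box constraints.
neg_marginal_loglik <- function(log_par) {
  alpha <- exp(log_par[1])
  beta <- exp(log_par[2])
  -sum(dnbinom(pump_x, size = alpha, prob = beta / (beta + pump_t), log = TRUE))
}

fit <- optim(c(0, 0), neg_marginal_loglik, method = "BFGS")
mle <- exp(fit$par)
names(mle) <- c("alpha", "beta")
mle
```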

2.8 Creating your own functions

Now let’s see an example of writing our own algorithm and using it on the model. We’ll do something simple: simulating multiple values for a designated set of nodes and calculating every part of the model that depends on them. More details on programming in NIMBLE are in Part IV.

Here is our nimbleFunction:

simNodesMany <- nimbleFunction(
  setup = function(model, nodes) {
    mv <- modelValues(model)
    deps <- model$getDependencies(nodes)
    allNodes <- model$getNodeNames()
  },
  run = function(n = integer()) {
    resize(mv, n)
    for(i in 1:n) {
      model$simulate(nodes)
      model$calculate(deps)
      copy(from = model, nodes = allNodes,
           to = mv, rowTo = i, logProb = TRUE)
    }
  })

simNodesTheta1to5 <- simNodesMany(pump, "theta[1:5]")

simNodesTheta6to10 <- simNodesMany(pump, "theta[6:10]")

Here are a few things to notice about the nimbleFunction.

1. The setup function is written in R. It creates relevant information specific to our model for use in the run-time code.

³ Table 2 of the paper accidentally swapped the two estimates.


2. The setup code creates a modelValues object to hold multiple sets of values for variables in

the model provided.

3. The run function is written in NIMBLE. It carries out the calculations using the information

determined once for each set of model and nodes arguments by the setup code. The run-time

code is what will be compiled.

4. The run code requires type information about the argument n. In this case it is a scalar

integer.

5. The for-loop looks just like R, but only sequential integer iteration is allowed.

6. The functions calculate and simulate, which were introduced above in R, can be used in

NIMBLE.

7. The special function copy is used here to record values from the model into the modelValues

object.

8. Multiple instances, or ‘specializations’, can be made by calling simNodesMany with different arguments. Above, simNodesTheta1to5 has been made by calling simNodesMany with the pump model and nodes "theta[1:5]" as inputs to the setup function, while simNodesTheta6to10 differs by providing "theta[6:10]" as an argument. The returned objects are objects of a uniquely generated R reference class with fields (member data) for the results of the setup code and a run method (member function).
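For readers who want to see the computation without the NIMBLE machinery, here is a plain-R analogue of what one run of simNodesMany does for the pump model. It is a sketch, not NIMBLE code: the helper `sim_nodes_many` is hypothetical, it assumes the model structure theta[i] ~ dgamma(alpha, beta) and x[i] ~ dpois(theta[i] * t[i]) with the standard pump data, and it stores results in plain matrices rather than a modelValues object.

```r
# Assumed pump data: exposure times t and failure counts x.
pump_t <- c(94.3, 15.7, 62.9, 126, 5.24, 31.4, 1.05, 1.05, 2.1, 10.5)
pump_x <- c(5, 1, 5, 14, 3, 19, 1, 1, 4, 22)

# Hypothetical helper: for each of n rows, simulate theta[nodes] from the
# prior, recompute the Poisson log probabilities of x, and record one row.
sim_nodes_many <- function(n, nodes, theta, alpha, beta) {
  theta_rows <- matrix(NA_real_, n, length(theta))
  logprob_rows <- matrix(NA_real_, n, length(theta))
  for (i in seq_len(n)) {
    theta[nodes] <- rgamma(length(nodes), shape = alpha, rate = beta)  # like model$simulate(nodes)
    theta_rows[i, ] <- theta                                           # like copy() into a row
    # recomputes every x for simplicity; only x[nodes] actually change
    logprob_rows[i, ] <- dpois(pump_x, theta * pump_t, log = TRUE)
  }
  list(theta = theta_rows, logProb_x = logprob_rows)
}

set.seed(1)
res <- sim_nodes_many(10, nodes = 1:5, theta = rep(0.5, 10), alpha = 0.82, beta = 1.26)
res$theta[1:2, ]
```

As with simNodesTheta1to5, the entries for theta[6:10] and x[6:10] are identical in every row, because only theta[1:5] are simulated.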

By the way, simNodesMany is very similar to a standard nimbleFunction provided with NIMBLE,

simNodesMV.

Now let’s execute this nimbleFunction in R, before compiling it.

set.seed(1) # make the calculation repeatable

pump$alpha <- pumpMLE[1]

pump$beta <- pumpMLE[2]

# make sure to update deterministic dependencies of the altered nodes

pump$calculate(pump$getDependencies(c("alpha","beta"), determOnly = TRUE))

## [1] 0

saveTheta <- pump$theta

simNodesTheta1to5$run(10)

simNodesTheta1to5$mv[["theta"]][1:2]

## [[1]]

## [1] 0.21829875 1.93210969 0.62296551 0.34197266 3.45729601 1.15835525

## [7] 0.99001994 0.30737332 0.09461909 0.15720154

##

## [[2]]

## [1] 0.82759981 0.08784057 0.34414959 0.29521943 0.14183505 1.15835525

## [7] 0.99001994 0.30737332 0.09461909 0.15720154


simNodesTheta1to5$mv[["logProb_x"]][1:2]

## [[1]]

## [1] -10.250111 -26.921849 -25.630612 -15.594173 -11.217566 -7.429868

## [7] -1.000761 -1.453644 -9.840589 -39.096527

##

## [[2]]

## [1] -61.043876 -1.057668 -11.060164 -11.761432 -3.425282 -7.429868

## [7] -1.000761 -1.453644 -9.840589 -39.096527

In this code we have set alpha and beta to their MLEs and then saved the current theta values to use below. Then we have requested 10 simulations from simNodesTheta1to5. Shown are the first two simulation results for theta and the log probabilities of x. Notice that theta[6:10] and the corresponding log probabilities for x[6:10] are unchanged, because the only nodes being simulated are theta[1:5]. Run uncompiled in R, this function is slow.

Finally, let’s compile the function and run that version.

CsimNodesTheta1to5 <- compileNimble(simNodesTheta1to5,

project = pump, resetFunctions = TRUE)

Cpump$alpha <- pumpMLE[1]

Cpump$beta <- pumpMLE[2]

Cpump$calculate(Cpump$getDependencies(c("alpha","beta"), determOnly = TRUE))

## [1] 0

Cpump$theta <- saveTheta

set.seed(1)

CsimNodesTheta1to5$run(10)

## NULL

CsimNodesTheta1to5$mv[["theta"]][1:2]

## [[1]]

## [1] 0.21829875 1.93210969 0.62296551 0.34197266 3.45729601 1.15835525

## [7] 0.99001994 0.30737332 0.09461909 0.15720154

##

## [[2]]

## [1] 0.82759981 0.08784057 0.34414959 0.29521943 0.14183505 1.15835525

## [7] 0.99001994 0.30737332 0.09461909 0.15720154


CsimNodesTheta1to5$mv[["logProb_x"]][1:2]

## [[1]]

## [1] -10.250111 -26.921849 -25.630612 -15.594173 -11.217566 -2.782156

## [7] -1.042151 -1.004362 -1.894675 -3.081102

##

## [[2]]

## [1] -61.043876 -1.057668 -11.060164 -11.761432 -3.425282 -2.782156

## [7] -1.042151 -1.004362 -1.894675 -3.081102

Given the same initial values and the same random number generator seed, we got identical results

for theta[1:5] and their dependencies, but it happened much faster.


Chapter 3

More introduction

Now that we have shown a brief example, we will introduce more about the concepts and design of

NIMBLE.

One of the most important concepts behind NIMBLE is to allow a combination of high-level processing in R and low-level processing in C++. For example, when we write a Metropolis-Hastings MCMC sampler in the NIMBLE language, the inspection of the model structure related to one node is done in R, and the actual sampler calculations are done in C++. This separation between setup and run steps will become clearer as we go.

3.1 NIMBLE adopts and extends the BUGS language for specifying models

We adopted the BUGS language, and we have extended it to make it more flexible. The BUGS language became widely used in WinBUGS, then in OpenBUGS and JAGS. These systems all provide automatically-generated MCMC algorithms, but we have adopted only the language for describing models, not their systems for generating MCMCs.

NIMBLE extends BUGS by:

1. allowing you to write new functions and distributions and use them in BUGS models;

2. allowing you to define multiple models in the same code using conditionals evaluated when the BUGS code is processed;

3. supporting a variety of more flexible syntax such as R-like named parameters and more general algebraic expressions.

By supporting new functions and distributions, NIMBLE makes BUGS an extensible language,

which is a major departure from previous packages that implement BUGS.

We adopted BUGS because it has been so successful, with over 30,000 users by the time they stopped counting (Lunn et al., 2009). Many papers and books provide BUGS code as a way to document their statistical models. We describe NIMBLE’s version of BUGS later. The web sites for WinBUGS, OpenBUGS and JAGS provide other useful documentation on writing models in BUGS. For the most part, if you have BUGS code, you can try NIMBLE.

NIMBLE does several things with BUGS code:


1. NIMBLE creates a model definition object that knows everything about the variables and their relationships written in the BUGS code. Usually you’ll ignore the model definition and let NIMBLE’s default options take you directly to the next step.

2. NIMBLE creates a model object.¹ This can be used to manipulate variables and operate the model from R. Operating the model includes calculating, simulating, or querying the log probability value of model nodes. These basic capabilities, along with the tools to query model structure, allow one to write programs that use the model and adapt to its structure.

3. When you’re ready, NIMBLE can generate customized C++ code representing the model,

compile the C++, load it back into R, and provide a new model object that uses the compiled

model internally. We use the word ‘compile’ to refer to all of these steps together.

As an example of how radical a departure NIMBLE is from previous BUGS implementations,

consider a situation where you want to simulate new data from a model written in BUGS code.

Since NIMBLE creates model objects that you can control from R, simulating new data is trivial.

With previous BUGS-based packages, this isn’t possible.

More information about specifying and manipulating models is in Chapters 6 and 13.

3.2 nimbleFunctions for writing algorithms

NIMBLE provides nimbleFunctions for writing functions that can (but don’t have to) use BUGS

models. The main ways that nimbleFunctions can use BUGS models are:

1. inspecting the structure of a model, such as determining the dependencies between variables,

in order to do the right calculations with each model;

2. accessing values of the model’s variables;

3. controlling execution of the model’s probability calculations or corresponding simulations;

4. managing modelValues data structures for multiple sets of model values and probabilities.

In fact, the calculations of the model are themselves constructed as nimbleFunctions, as are the algorithms provided in NIMBLE’s algorithm library.²

Programming with nimbleFunctions involves a fundamental distinction between two stages of processing:

1. A setup function within a nimbleFunction gives the steps that need to happen only once for each new situation (e.g., for each new model). Typically such steps include inspecting the model’s variables and their relationships, such as determining which parts of a model will need to be calculated for an MCMC sampler. Setup functions are executed in R and never compiled.

2. One or more run functions within a nimbleFunction give steps that need to happen multiple times using the results of the setup function, such as the iterations of an MCMC sampler. Formally, run code is written in the NIMBLE language, which you can think of as a small subset of R along with features for operating models and related data structures. The NIMBLE language is what the NIMBLE compiler can automatically turn into C++ as part of a compiled nimbleFunction.

¹ Or multiple model objects.

² That’s why it’s easy to use new functions and distributions written as nimbleFunctions in BUGS code.

What NIMBLE does with a nimbleFunction is similar to what it does with a BUGS model:

1. NIMBLE creates a working R version of the nimbleFunction. This is most useful for debugging

(Section 15.7).

2. When you are ready, NIMBLE can generate C++ code, compile it, load it back into R and

give you new objects that use the compiled C++ internally. Again, we refer to these steps all

together as ‘compilation’. The behavior of compiled nimbleFunctions is usually very similar,

but not identical, to their uncompiled counterparts.

If you are familiar with object-oriented programming, you can think of a nimbleFunction as a class definition. The setup function initializes a new object and run functions are class methods. Member data are determined automatically: they are the objects created in the setup function that are needed in run functions. If no setup function is provided, the nimbleFunction corresponds to a simple (compilable) function rather than a class.
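The class analogy can be illustrated with an ordinary R reference class, which is also the kind of object an uncompiled specialization is. In this sketch, which is an analogy and not how NIMBLE generates its classes, `initialize` plays the role of setup (run once per object, storing member data) and `run` reuses that member data each time it is called. The class name `WeightedSum` and its field `w` are hypothetical.

```r
library(methods)

# "setup" (initialize) computes member data once; "run" reuses it.
WeightedSum <- setRefClass("WeightedSum",
  fields = list(w = "numeric"),               # member data
  methods = list(
    initialize = function(..., n = 0) {
      callSuper(...)
      w <<- rep(1 / max(n, 1), n)             # setup step: equal weights
      invisible(.self)
    },
    run = function(x) {
      sum(w * x)                              # run step: uses member data
    }
  )
)

avg3 <- WeightedSum$new(n = 3)   # one "specialization"
avg4 <- WeightedSum$new(n = 4)   # another, with different member data
avg3$run(c(1, 2, 3))
avg4$run(c(1, 2, 3, 4))
```

Just as simNodesTheta1to5 and simNodesTheta6to10 share code but hold different setup results, avg3 and avg4 share the same run method but carry different weights.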

More about writing algorithms is in Chapter 15.

3.3 The NIMBLE algorithm library

In Version 0.6.13, the NIMBLE algorithm library includes:

1. MCMC with samplers including conjugate (Gibbs), slice, adaptive random walk (with options for reflection or sampling on a log scale), adaptive block random walk, and elliptical slice, among others. You can modify sampler choices and configurations from R before compiling the MCMC. You can also write new samplers as nimbleFunctions.

2. WAIC calculation for model comparison after an MCMC algorithm has been run.

3. A set of particle filter (sequential Monte Carlo) methods, including a basic bootstrap filter, auxiliary particle filter, and Liu-West filter.

4. An ascent-based Monte Carlo Expectation Maximization (MCEM) algorithm.

5. A variety of basic functions that can be used as programming tools for larger algorithms.

These include:

a. A likelihood function for arbitrary parts of any model.

b. Functions to simulate one or many sets of values for arbitrary parts of any model.

c. Functions to calculate the summed log probability (density) for one or many sets of

values for arbitrary parts of any model along with stochastic dependencies in the model

structure.

More about the NIMBLE algorithm library is in Chapter 8.


Chapter 4

Installing NIMBLE

4.1 Requirements to run NIMBLE

You can run NIMBLE on any of the three common operating systems: Linux, Mac OS X, or

Windows.

The following are required to run NIMBLE.

1. R, of course.

2. The igraph and coda R packages.

3. A working C++ compiler that NIMBLE can use from R on your system. There are standard

open-source C++ compilers that the R community has already made easy to install. See

Section 4.2 for instructions. You don’t need to know anything about C++ to use NIMBLE.

This must be done before installing NIMBLE.

NIMBLE also uses a couple of C++ libraries that you don’t need to install, as they will already be

on your system or are provided by NIMBLE.

1. The Eigen C++ library for linear algebra. This comes with NIMBLE, or you can use your

own copy.

2. The BLAS and LAPACK numerical libraries. These come with R, but see Section 4.4.3 for

how to use a faster version of the BLAS.

Most fairly recent versions of these requirements should work.

4.2 Installing a C++ compiler for NIMBLE to use

NIMBLE needs a C++ compiler and the standard utility make in order to generate and compile C++ for models and algorithms.¹

¹ This differs from most packages, which might need a C++ compiler only when the package is built. If you normally install R packages using install.packages on Windows or OS X, the package arrives already built to your system.


4.2.1 OS X

On OS X, you should install Xcode. The command-line tools, which are available as a smaller installation, should be sufficient. These are freely available from the Apple developer site and the App Store.

For the compiler to work correctly for OS X, the installed R must be for the correct version of OS X. For example, R for Snow Leopard (OS X version 10.6) will attempt to use an incorrect C++ compiler if the installed OS X is actually version 10.9 or higher.

In the somewhat unlikely event you want to install from the source package rather than the CRAN binary package, the easiest approach is to use the source package provided at R-nimble.org. If you do want to install from the source package provided by CRAN, you’ll also need to install gfortran.

4.2.2 Linux

On Linux, you can install the GNU compiler suite (gcc/g++). You can use the package manager to install pre-built binaries. On Ubuntu, the following command will install or update make, gcc and libc.

sudo apt-get install build-essential

4.2.3 Windows

On Windows, you should download and install Rtools.exe available from https://cran.r-project.org/bin/windows/Rtools/. Select the appropriate executable corresponding to your version of R (and follow the urge to update your version of R if you notice it is not the most recent). This installer leads you through several ‘pages’. We think you can accept the defaults with one exception: check the PATH checkbox (page 5) so that the installer will add the location of the C++ compiler and related tools to your system’s PATH, ensuring that R can find them. After you click ‘Next’, you will get a page with a window for customizing the new PATH variable. You shouldn’t need to do anything there, so you can simply click ‘Next’ again.

The checkbox for the ‘R 2.15+ toolchain’ (page 4) must be checked (in order to have gcc/g++,

make, etc. installed). This should be checked by default.

4.3 Installing the NIMBLE package

Since NIMBLE is an R package, you can install it in the usual way, via install.packages("nimble")

in R or using the R CMD INSTALL method if you download the package source directly.

NIMBLE can also be obtained from the NIMBLE website. To install from our website, please see our Download page for the specific invocation of install.packages.


4.3.1 Problems with installation

We have tested the installation on the three commonly used platforms: MacOS, Linux, and Windows.² We don’t anticipate problems with installation, but we want to hear about any and help resolve them. Please post about installation problems to the nimble-users Google group or email nimble.stats@gmail.com.

4.4 Customizing your installation

For most installations, you can ignore low-level details. However, there are some options that some

users may want to utilize.

4.4.1 Using your own copy of Eigen

NIMBLE uses the Eigen C++ template library for linear algebra. Version 3.2.1 of Eigen is included in the NIMBLE package and that version will be used unless the package’s configuration script finds another version on the machine. This works well, and the following is only relevant if you want to use a different (e.g., newer) version.

The configuration script looks in the standard include directories, e.g. /usr/include and /usr/local/include, for the header file Eigen/Dense. You can specify a particular location in either of two ways:

1. Set the environment variable EIGEN_DIR before installing the R package, for example: export EIGEN_DIR=/usr/include/eigen3 in the bash shell.

2. Use R CMD INSTALL --configure-args='--with-eigen=/path/to/eigen' nimble_VERSION.tar.gz

or install.packages("nimble", configure.args = "--with-eigen=/path/to/eigen")

In these cases, the directory should be the full path to the directory that contains the Eigen

directory, e.g., /usr/include/eigen3. It is not the full path to the Eigen directory itself, i.e.,

NOT /usr/include/eigen3/Eigen.
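Since the most likely mistake is pointing one level too deep, a quick check from R can help before installing. This sketch only tests for the Eigen/Dense header under a candidate directory; the helper name `is_valid_eigen_dir` and the temporary directory layout are hypothetical and merely mimic /usr/include/eigen3.

```r
# TRUE if `path` is the directory that contains Eigen/ (the form expected
# above), FALSE if it is Eigen/ itself or lacks the Dense header.
is_valid_eigen_dir <- function(path) {
  file.exists(file.path(path, "Eigen", "Dense"))
}

# Build a throwaway directory laid out like /usr/include/eigen3
root <- file.path(tempdir(), "eigen3")
dir.create(file.path(root, "Eigen"), recursive = TRUE, showWarnings = FALSE)
invisible(file.create(file.path(root, "Eigen", "Dense")))

is_valid_eigen_dir(root)                      # the correct, parent directory
is_valid_eigen_dir(file.path(root, "Eigen"))  # one level too deep
```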

4.4.2 Using libnimble

NIMBLE generates specialized C++ code for user-specified models and nimbleFunctions. This code uses some NIMBLE C++ library classes and functions. By default, on Linux the library code is compiled once as a linkable library, libnimble.so. This single instance of the library is then linked with the code for each generated model. In contrast, the default for Windows and Mac OS X is to compile the library code as a static library, libnimble.a, that is compiled into each model’s and each algorithm’s own dynamically loadable library (DLL). This does repeat the same code across models and so occupies more memory. There may be a marginal speed advantage. If one would like to enable the linkable library in place of the static library (do this only on Mac OS X and other UNIX variants and not on Windows), one can install the source package with the configuration argument --enable-dylib set to true. First obtain the NIMBLE source package (which will have the extension .tar.gz) from our website and then install as follows, replacing VERSION with the appropriate version number:

R CMD INSTALL --configure-args='--enable-dylib=true' nimble_VERSION.tar.gz

² We’ve tested NIMBLE on Windows 7, 8 and 10.

4.4.3 BLAS and LAPACK

NIMBLE also uses BLAS and LAPACK for some of its linear algebra (in particular calculating

density values and generating random samples from multivariate distributions). NIMBLE will use

the same BLAS and LAPACK installed on your system that R uses. Note that a fast (and where

appropriate, threaded) BLAS can greatly increase the speed of linear algebra calculations. See

Section A.3.1 of the R Installation and Administration manual available on CRAN for more details

on providing a fast BLAS for your R installation.
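One simple way to see whether a BLAS change pays off is to time the same dense matrix work before and after switching. The sketch below times repeated `crossprod` calls, which R hands to its BLAS for ordinary numeric matrices. The matrix size and repetition count are arbitrary choices, and timings depend on the machine, so compare only runs on the same system.

```r
# Time a BLAS-heavy operation; rerun after changing the BLAS to compare.
set.seed(1)
m <- matrix(rnorm(500 * 500), nrow = 500)
timing <- system.time(for (k in 1:5) crossprod(m))
timing[["elapsed"]]  # wall-clock seconds
```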

4.4.4 Customizing compilation of the NIMBLE-generated C++

For each model or nimbleFunction, NIMBLE can generate and compile C++. To compile generated C++, NIMBLE makes system calls starting with R CMD SHLIB and therefore uses the regular R configuration in ${R_HOME}/etc/${R_ARCH}/Makeconf. NIMBLE places a Makevars file in the directory in which the code is generated, and R CMD SHLIB uses this file as usual.

In all but specialized cases, the general compilation mechanism will suffice. However, one can customize this. One can specify the location of an alternative Makevars (or Makevars.win) file to use. Such an alternative file should define the variables PKG_CPPFLAGS and PKG_LIBS. These should contain, respectively, the pre-processor flag to locate the NIMBLE include directory, and the necessary libraries to link against (and their location as necessary), e.g., Rlapack and Rblas on Windows, and libnimble. Advanced users can also change their default compilers by editing the Makevars file; see Section 1.2.1 of the Writing R Extensions manual available on CRAN.

Use of this file allows users to specify additional compilation and linking flags. See the Writing R Extensions manual for more details of how this can be used and what it can contain.

Part II

Models in NIMBLE


Chapter 5

Writing models in NIMBLE’s dialect of BUGS

Models in NIMBLE are written using a variation on the BUGS language. From BUGS code,

NIMBLE creates a model object. This chapter describes NIMBLE’s version of BUGS. The next

chapter explains how to build and manipulate model objects.

5.1 Comparison to BUGS dialects supported by WinBUGS, OpenBUGS and JAGS

Many users will come to NIMBLE with some familiarity with WinBUGS, OpenBUGS, or JAGS, so

we start by summarizing how NIMBLE is similar to and different from those before documenting

NIMBLE’s version of BUGS more completely. In general, NIMBLE aims to be compatible with the

original BUGS language and also JAGS’ version. However, at this point, there are some features

not supported by NIMBLE, and there are some extensions that are planned but not implemented.

5.1.1 Supported features of BUGS and JAGS

1. Stochastic and deterministic¹ node declarations.

2. Most univariate and multivariate distributions.

3. Link functions.

4. Most mathematical functions.

5. ‘for’ loops for iterative declarations.

6. Arrays of nodes up to 4 dimensions.

7. Truncation and censoring as in JAGS using the T() notation and dinterval.

5.1.2 NIMBLE’s Extensions to BUGS and JAGS

NIMBLE extends the BUGS language in the following ways:

¹ NIMBLE calls non-stochastic nodes ‘deterministic’, whereas BUGS calls them ‘logical’. NIMBLE uses ‘logical’ in the way R does, to refer to boolean (TRUE/FALSE) variables.


1. User-defined functions and distributions, written as nimbleFunctions, can be used in model code. See Chapter 12.

2. Multiple parameterizations for distributions, similar to those in R, can be used.

3. Named parameters for distributions and functions, similar to R function calls, can be used.

4. Linear algebra, including for vectorized calculations of simple algebra, can be used in deterministic declarations.

5. Distribution parameters can be expressions, as in JAGS but not in WinBUGS. Caveat: parameters to multivariate distributions (e.g., dmnorm) cannot be expressions (but an expression can be defined in a separate deterministic expression and the resulting variable then used).

6. Alternative models can be defined from the same model code by using if-then-else statements that are evaluated when the model is defined.

7. More flexible indexing of vector nodes within larger variables is allowed. For example, one can place a multivariate normal vector arbitrarily within a higher-dimensional object, not just in the last index.

8. More general constraints can be declared using dconstraint, which extends the concept of

JAGS’ dinterval.

9. Link functions can be used in stochastic, as well as deterministic, declarations.²

10. Data values can be reset, and which parts of a model are flagged as data can be changed, allowing one model to be used for different data sets without rebuilding the model each time.

11. As of Version 0.6-6, NIMBLE supports stochastic (dynamic) indexes. In earlier versions, all indexes needed to be constants; now indexes can be other nodes or functions of other nodes. For a given dimension of a node being indexed, if the index is not constant, it must be a scalar value. So expressions such as mu[k[i], 3] or mu[k[i], 1:3] or mu[k[i], j[i]] are allowed, but not mu[k[i]:(k[i]+1)]. Nested dynamic indexes such as mu[k[j[i]]] are also allowed.

5.1.3 Not-yet-supported features of BUGS and JAGS

In this release, the following are not supported.

1. The appearance of the same node on the left-hand side of both a <- and a ~ declaration (used in WinBUGS for data assignment for the value of a stochastic node).

2. Multivariate nodes must appear with brackets, even if they are empty. E.g., x cannot be multivariate but x[] or x[2:5] can be.

3. NIMBLE generally determines the dimensionality and sizes of variables from the BUGS code.

However, when a variable appears with blank indices, such as in x.sum <- sum(x[]), and

if the dimensions of the variable are not clearly defined in other declarations, NIMBLE currently requires that the dimensions of x be provided when the model object is created (via nimbleModel).

5.2 Writing models

Here we introduce NIMBLE’s version of BUGS. The WinBUGS, OpenBUGS and JAGS manuals

are also useful resources for writing BUGS models, including many examples.

² But beware of the possibility of needing to set values for ‘lifted’ nodes created by NIMBLE.


5.2.1 Declaring stochastic and deterministic nodes

BUGS is a declarative language for graphical (or hierarchical) models. Most programming languages

are imperative, which means a series of commands will be executed in the order they are written. A

declarative language like BUGS is more like building a machine before using it. Each line declares

that a component should be plugged into the machine, but it doesn’t matter in what order they

are declared as long as all the right components are plugged in by the end of the code.

The machine in this case is a graphical model.³ A node (sometimes called a vertex) holds one value, which may be a scalar or a vector. Edges define the relationships between nodes. A huge variety of statistical models can be thought of as graphs.
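Why declaration order does not matter can be seen from the graph itself: once every node knows its parents, a topological sort recovers a valid calculation order. The sketch below lists the parents of one observation's worth of nodes from the linear regression model that follows, deliberately out of order, and repeatedly places nodes whose parents have already been placed. It is an illustration in plain R, not NIMBLE's internal graph code; `parents` and `topo_order` are hypothetical names.

```r
# Each node's parents, declared in an arbitrary order.
parents <- list(
  "y[1]"           = c("predicted.y[1]", "sigma"),
  "predicted.y[1]" = c("intercept", "slope", "x[1]"),
  "sigma"          = character(0),
  "slope"          = character(0),
  "x[1]"           = character(0),
  "intercept"      = character(0)
)

# Simple topological sort: repeatedly place every node whose parents are
# already placed; stop with an error if no progress is possible (a cycle).
topo_order <- function(parents) {
  remaining <- parents
  ordered <- character(0)
  while (length(remaining) > 0) {
    ready <- names(remaining)[vapply(remaining,
                                     function(p) all(p %in% ordered),
                                     logical(1))]
    if (length(ready) == 0) stop("graph has a cycle")
    ordered <- c(ordered, ready)
    remaining <- remaining[setdiff(names(remaining), ready)]
  }
  ordered
}

ord <- topo_order(parents)
ord
```

Every node ends up after all of its parents, whatever order the declarations were written in.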

Here is the code to dene and create a simple linear regression model with four observations.

library(nimble)

mc <- nimbleCode({
  intercept ~ dnorm(0, sd = 1000)
  slope ~ dnorm(0, sd = 1000)
  sigma ~ dunif(0, 100)
  for(i in 1:4) {
    predicted.y[i] <- intercept + slope * x[i]
    y[i] ~ dnorm(predicted.y[i], sd = sigma)
  }
})

model <- nimbleModel(mc, data = list(y = rnorm(4)))

library(igraph)

layout <- matrix(ncol = 2,byrow = TRUE,

# These seem to be rescaled to fit in the plot area,

# so I'll just use 0-100 as the scale

data = c(33,100,

66,100,

50,0,# first three are parameters

15,50,35,50,55,50,75,50,# x's

20,75,40,75,60,75,80,75,# predicted.y's

25,25,45,25,65,25,85,25)# y's

)

sizes <- c(45,30,30,

rep(20,4),

rep(50,4),

rep(20,4))

edge.color <- "black"

# c(

³ Technically, a directed acyclic graph.


# rep("green", 8),

# rep("red", 4),

# rep("blue", 4),

# rep("purple", 4))

stoch.color <- "deepskyblue2"

det.color <- "orchid3"

rhs.color <- "gray73"

fill.color <- c(

rep(stoch.color, 3),

rep(rhs.color, 4),

rep(det.color, 4),

rep(stoch.color, 4)

)

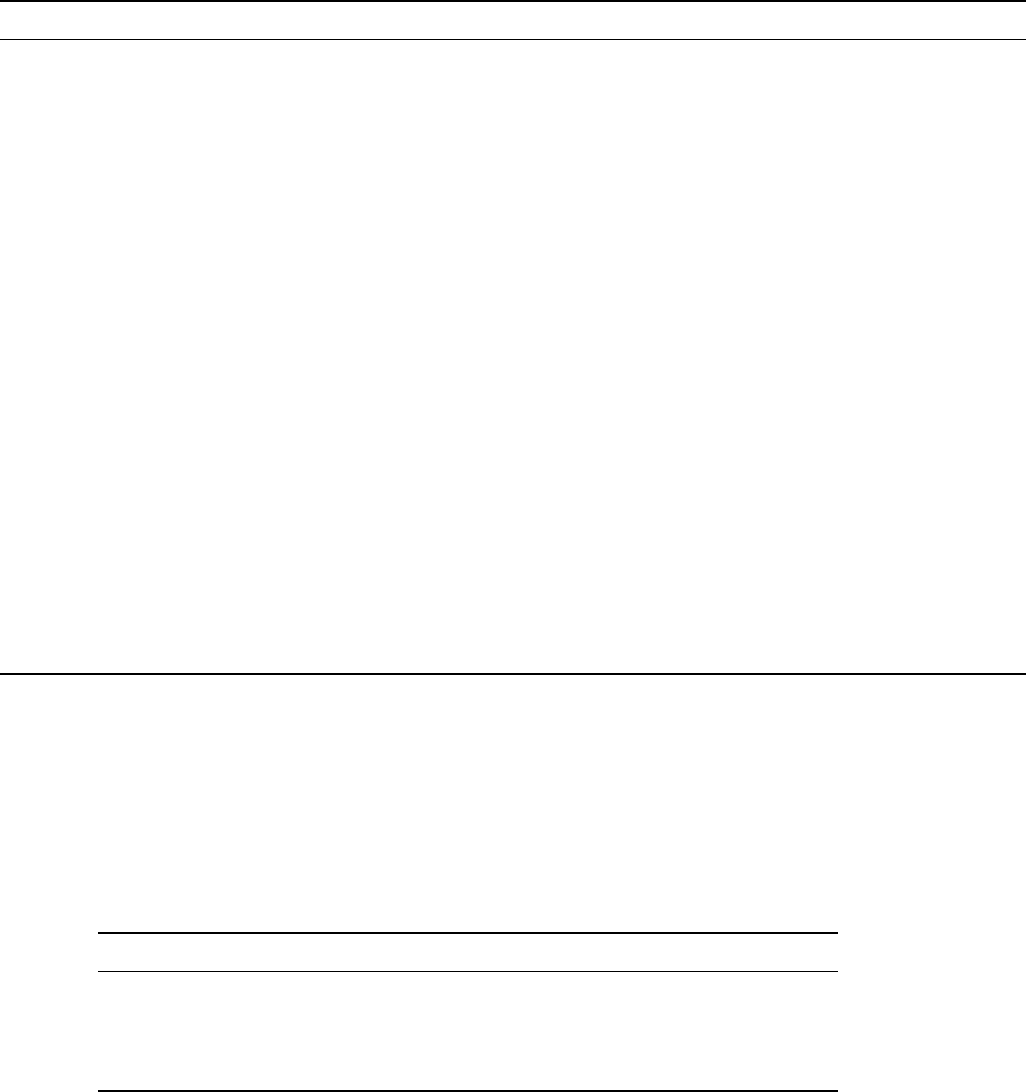

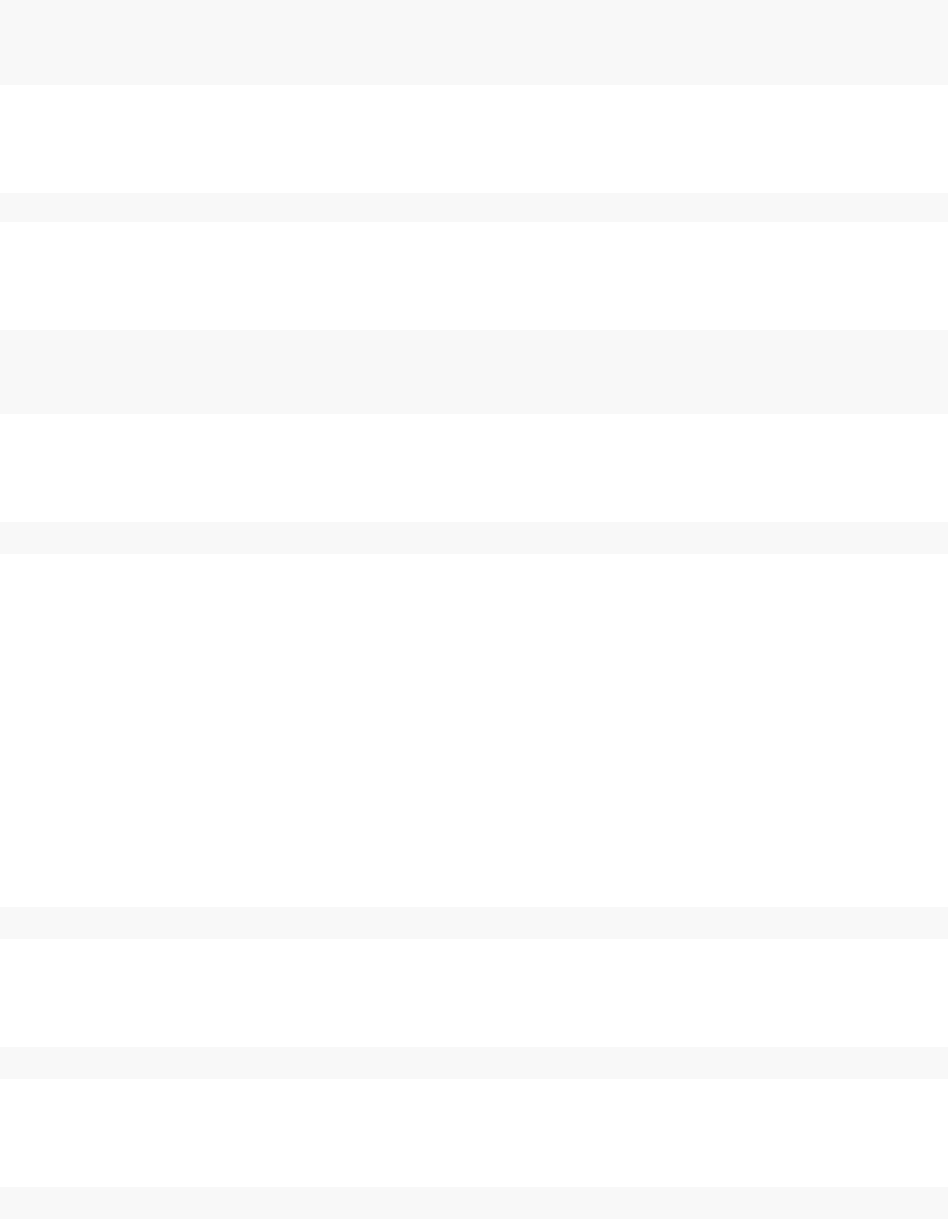

plot(model$graph, vertex.shape = "crectangle",

vertex.size = sizes,

vertex.size2 = 20,

layout = layout,

vertex.label.cex = 3.0,

vertex.color = fill.color,

edge.width = 3,

asp = 0.5,

edge.color = edge.color)

Figure 5.1: Graph of a linear regression model (nodes: intercept, slope, sigma; x[1] to x[4]; predicted.y[1] to predicted.y[4]; y[1] to y[4])

The graph representing the model is shown in Figure 5.1. Each observation, y[i], is a node whose edges say that it follows a normal distribution depending on a predicted value, predicted.y[i], and a standard deviation, sigma, each of which is itself a node. Each predicted value is a node whose edges say how it is calculated from slope, intercept, and one value of an explanatory variable, x[i], each of which is also a node.

This graph is created from the following BUGS code:

{

intercept ~ dnorm(0,sd = 1000)

slope ~ dnorm(0,sd = 1000)

sigma ~ dunif(0,100)

for(i in 1:4) {

predicted.y[i] <- intercept + slope * x[i]

y[i] ~ dnorm(predicted.y[i], sd = sigma)

}

}

In this code, stochastic relationships are declared with ‘~’ and deterministic relationships are declared with ‘<-’. For example, each y[i] follows a normal distribution with mean predicted.y[i] and standard deviation sigma. Each predicted.y[i] is the result of intercept + slope * x[i]. The for-loop is equivalent to writing four lines of code, each with a different value of i.

It does not matter in what order the nodes are declared. Imagine that each line of code draws part of Figure 5.1; all that matters is that everything gets drawn in the end. Available distributions, default and alternative parameterizations, and functions are listed in Section 5.2.4.

An equivalent graph can be created by this BUGS code:

{

intercept ~ dnorm(0,sd = 1000)

slope ~ dnorm(0,sd = 1000)

sigma ~ dunif(0,100)

for(i in 1:4) {

y[i] ~ dnorm(intercept + slope * x[i], sd = sigma)

}

}

In this case, the predicted.y[i] nodes in Figure 5.1 will be created automatically by NIMBLE and will be given different names, generated by NIMBLE.

5.2.2 More kinds of BUGS declarations

Here are some examples of valid lines of BUGS code. This code does not describe a sensible or complete model; instead, the purpose of each line is to illustrate a feature of NIMBLE’s version of BUGS. Some indices (e.g., mvx[8:10, i]) are arbitrary, to illustrate flexibility.

{

# 1. normal distribution with BUGS parameter order

x ~ dnorm(a + b * c, tau)

# 2. normal distribution with a named parameter


y ~ dnorm(a + b * c, sd = sigma)

# 3. For-loop and nested indexing

for(i in 1:N) {

for(j in 1:M[i]) {

z[i,j] ~ dexp(r[ blockID[i] ])

}

}

# 4. multivariate distribution with arbitrary indexing

for(i in 1:3)

mvx[8:10, i] ~ dmnorm(mvMean[3:5], cov = mvCov[1:3,1:3, i])

# 5. User-provided distribution

w ~ dMyDistribution(hello = x, world = y)

# 6. Simple deterministic node

d1 <- a + b

# 7. Vector deterministic node with matrix multiplication

d2[] <- A[ , ] %*% mvMean[1:5]

# 8. Deterministic node with user-provided function

d3 <- foo(x, hooray = y)

}

When a variable appears only on the right-hand side, it can be provided via constants (in which

case it can never be changed) or via data or inits, as discussed in Chapter 6.

Notes on the comment-numbered lines are:

1. x follows a normal distribution with mean a + b*c and precision tau (the default second parameter of dnorm in BUGS).

2. y follows a normal distribution with the same mean as x, but with a named standard deviation parameter instead of a precision parameter (sd = 1/sqrt(precision)).

3. z[i, j] follows an exponential distribution with parameter r[ blockID[i] ]. This shows how for-loops can be used for indexing of variables containing multiple nodes. Variables that define for-loop indices (N and M) must also be provided as constants.

4. The arbitrary block mvx[8:10, i] follows a multivariate normal distribution, with a named covariance matrix instead of BUGS’ default of a precision matrix. As in R, curly braces for for-loop contents are only needed if there is more than one line.

5. w follows a user-defined distribution. See Chapter 12.

6. d1 is a scalar deterministic node that, when calculated, will be set to a + b.

7. d2 is a vector deterministic node using matrix multiplication in R’s syntax.

8. d3 is a deterministic node using a user-provided function. See Chapter 12.
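Notes 1 and 2 rely on the relationship sd = 1/sqrt(precision) between the BUGS precision parameterization of dnorm and the standard-deviation parameterization. The base R sketch below checks that relationship numerically; it does not use NIMBLE, and `dnorm_prec` and `dnorm_prec_formula` are hypothetical helper names chosen for illustration.

```r
# Hypothetical helper: normal density under the BUGS (mean, precision)
# parameterization, computed via R's (mean, sd) parameterization.
dnorm_prec <- function(x, mean, tau) {
  dnorm(x, mean = mean, sd = 1 / sqrt(tau))
}

# The same density written out directly from the precision form:
# sqrt(tau / (2 * pi)) * exp(-tau * (x - mean)^2 / 2)
dnorm_prec_formula <- function(x, mean, tau) {
  sqrt(tau / (2 * pi)) * exp(-tau * (x - mean)^2 / 2)
}

x <- c(-1.5, 0, 0.3, 2)
tau <- 4  # precision 4 corresponds to sd = 1 / sqrt(4) = 0.5

d_helper  <- dnorm_prec(x, mean = 0.2, tau = tau)
d_formula <- dnorm_prec_formula(x, mean = 0.2, tau = tau)
d_sd      <- dnorm(x, mean = 0.2, sd = 0.5)
print(rbind(d_helper, d_formula, d_sd))
```

All three rows agree, which is why dnorm(mu, tau) in BUGS order and dnorm(mu, sd = 1/sqrt(tau)) describe the same distribution.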

5.2.2.1 More about indexing

Examples of allowed indexing include:

• x[i] # a single index
• x[i:j] # a range of indices
• x[i:j,k:l] # multiple single indices or ranges for higher-dimensional arrays
• x[i:j, ] # blank indices indicating the full range
• x[3*i+7] # computed indices
• x[(3*i):(5*i+1)] # computed lower and upper ends of an index range
• x[k[i]+1] # a dynamic (and computed) index
• x[k[j[i]]] # nested dynamic indexes
• x[k[i], 1:3] # nested indexing of rows or columns
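The computed and nested index forms above select elements the same way the corresponding R expressions do. The base R sketch below evaluates a few of them with made-up values for x, i, j and k so the selected elements can be traced by hand; it only illustrates the indexing arithmetic. In a NIMBLE model the index variables would be constants, data, or nodes, and dynamic indexes can change when those nodes change.

```r
# Made-up values for illustration: x[n] equals 10 * n.
x <- seq(10, 200, by = 10)
k <- c(4, 2, 7)
j <- c(3, 1)
i <- 2

computed_index <- x[3 * i + 7]           # x[13]
computed_range <- x[(3 * i):(5 * i + 1)] # x[6:11]
dynamic_index  <- x[k[i] + 1]            # k[2] = 2, so x[3]
nested_index   <- x[k[j[i]]]             # j[2] = 1, k[1] = 4, so x[4]

print(list(computed_index, computed_range, dynamic_index, nested_index))
```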

Unlike JAGS, NIMBLE does not allow multivariate nodes to be used without square brackets. Therefore a statement like xbar <- mean(x) in JAGS must be converted to xbar <- mean(x[]) (if x is a vector) or xbar <- mean(x[,]) (if x is a matrix) for NIMBLE.⁴ Section 6.1.1.5 discusses how to provide NIMBLE with the dimensions of x when needed.

Generally NIMBLE supports R-like linear algebra expressions and attempts to follow the same rules as R about dimensions (although in some cases this is not possible). For example, x[1:3] %*% y[1:3] converts x[1:3] into a row vector and thus computes the inner product, which is returned as a 1×1 matrix (use inprod to get it as a scalar, which is typically easier). As in R, a scalar index will result in dropping a dimension unless the argument drop=FALSE is provided. For example, mymatrix[i, 1:3] will be a vector of length 3, but mymatrix[i, 1:3, drop=FALSE] will be a 1×3 matrix. More about indexing and dimensions is discussed in Section 11.3.2.6.
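Since NIMBLE attempts to follow R's dimension rules, the behavior described above can be previewed in plain R. The sketch below runs in base R only: `sum(x * y)` stands in for NIMBLE's inprod, which is not a base R function, and the values of x, y, mymatrix and i are made up for illustration.

```r
x <- c(1, 2, 3)
y <- c(4, 5, 6)

# Inner product via %*%: the result is a 1 x 1 matrix, not a scalar.
ip <- x %*% y
# A plain scalar inner product (the role inprod plays in NIMBLE code).
ip_scalar <- sum(x * y)

mymatrix <- matrix(1:12, nrow = 4)  # 4 x 3, filled column by column
i <- 2
row_vec <- mymatrix[i, 1:3]                # scalar row index drops a dimension
row_mat <- mymatrix[i, 1:3, drop = FALSE]  # stays a 1 x 3 matrix

print(dim(ip)); print(ip_scalar)
print(dim(row_vec)); print(dim(row_mat))
```

Here `dim(row_vec)` is `NULL` because the result is a plain vector, while `row_mat` keeps its matrix shape.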

5.2.3 Vectorized versus scalar declarations

Suppose you need nodes logY[i] that should be the log of the corresponding Y[i], say for i from 1 to 10. Conventionally this would be created with a for loop:

{

for(i in 1:10) {

logY[i] <- log(Y[i])

}

}

Since NIMBLE supports R-like algebraic expressions, an alternative in NIMBLE’s dialect of BUGS

is to use a vectorized declaration like this:

{

logY[1:10] <- log(Y[1:10])

}

There is an important difference between the models that are created by the above two methods. The first creates 10 scalar nodes, logY[1], ..., logY[10]. The second creates one vector node, logY[1:10]. If each logY[i] is used separately by an algorithm, it may be more computationally efficient to declare them as scalars. If they are all used together, it will often make sense to declare them as a vector.
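One way to see the efficiency trade-off is to count work after a single Y[i] changes. The base R sketch below is a toy cost model under that assumption, not NIMBLE's implementation: with scalar nodes only the affected logY[i] is recomputed, while a single vector node is recomputed as a whole. The helper names `update_scalar` and `update_vector` are hypothetical.

```r
Y <- exp(1:10)
logY <- log(Y)

# Scalar declarations: each logY[i] is its own node, so only the node that
# depends on the changed Y[i] needs to be recalculated (1 log evaluation).
update_scalar <- function(logY, Y, i) {
  logY[i] <- log(Y[i])
  list(logY = logY, evals = 1L)
}

# Vectorized declaration: logY[1:10] is one node, so the whole vector is
# recalculated (i is ignored; all length(Y) log evaluations are done).
update_vector <- function(logY, Y, i) {
  logY[] <- log(Y)
  list(logY = logY, evals = length(Y))
}

Y[3] <- exp(42)                 # change a single element of Y
s <- update_scalar(logY, Y, 3)
v <- update_vector(logY, Y, 3)
print(c(scalar = s$evals, vector = v$evals))
```

Both updates give the same values; they differ only in how much is recomputed. When an algorithm instead needs all ten values together, the single vector node avoids handling ten separate nodes.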

⁴ In nimbleFunctions, as explained in later chapters, square brackets with blank indices are not necessary for multivariate objects.


5.2.4 Available distributions

5.2.4.1 Distributions

NIMBLE supports most of the distributions allowed in BUGS and JAGS. Table 5.1 lists the distributions that are currently supported, with their default parameterizations, which match those of BUGS.⁵ NIMBLE also allows one to use alternative parameterizations for a variety of distributions, as described next. See Section 12.2 to learn how to write new distributions using nimbleFunctions.
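Several default parameterizations in Table 5.1 differ from the ones used by R's own density functions, so converting parameters is a common source of mistakes. The base R sketch below writes three densities from the table out as formulas and compares each with a base R density after converting the parameter: rate τ to scale 1/τ for the logistic, precision τ to sdlog 1/sqrt(τ) for the log-normal, and a change of variables from the gamma for the inverse gamma (base R has no inverse-gamma density). The evaluation points and parameter values are arbitrary.

```r
x  <- c(0.2, 1, 3.5)   # arbitrary evaluation points (x > 0 for all three)
mu <- 0.5

# Logistic with rate tau (table form); R's dlogis() uses scale = 1 / tau.
tau <- 2
logis_rate <- tau * exp((x - mu) * tau) / (1 + exp((x - mu) * tau))^2
logis_r    <- dlogis(x, location = mu, scale = 1 / tau)

# Log-normal with precision taulog; R's dlnorm() uses sdlog = 1 / sqrt(taulog).
taulog <- 4
lnorm_prec <- sqrt(taulog / (2 * pi)) * (1 / x) *
  exp(-taulog * (log(x) - mu)^2 / 2)
lnorm_r    <- dlnorm(x, meanlog = mu, sdlog = 1 / sqrt(taulog))

# Inverse gamma (shape r, scale lambda): if X ~ Gamma(shape r, rate lambda),
# then 1/X has this density, so f(x) = dgamma(1/x, r, rate = lambda) / x^2.
r <- 3
lambda <- 2
invgamma_formula <- lambda^r * x^(-(r + 1)) * exp(-lambda / x) / gamma(r)
invgamma_r       <- dgamma(1 / x, shape = r, rate = lambda) / x^2

print(rbind(logis_rate, logis_r, lnorm_prec, lnorm_r,
            invgamma_formula, invgamma_r))
```

Each pair of rows should agree, confirming the parameter conversions.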

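Table 5.1: Distributions with their default order of parameters. The value of the random variable is denoted by x.

| Name | Usage | Density | Lower | Upper |
|---|---|---|---|---|
| Bernoulli | dbern(prob = p), $0 < p < 1$ | $p^x (1-p)^{1-x}$ | 0 | 1 |
| Beta | dbeta(shape1 = a, shape2 = b), $a > 0$, $b > 0$ | $\frac{x^{a-1}(1-x)^{b-1}}{\beta(a,b)}$ | 0 | 1 |
| Binomial | dbin(prob = p, size = n), $0 < p < 1$, $n \in \mathbb{N}^*$ | $\binom{n}{x} p^x (1-p)^{n-x}$ | 0 | n |
| CAR (intrinsic) | dcar_normal(adj, weights, num, tau, c, zero_mean) | see Chapter 9 for details | | |
| CAR (proper) | dcar_proper(mu, C, adj, num, M, tau, gamma) | see Chapter 9 for details | | |
| Categorical | dcat(prob = p), $p \in (\mathbb{R}^+)^N$ | $\frac{p_x}{\sum_i p_i}$ | 1 | N |
| Chi-square | dchisq(df = k), $k > 0$ | $\frac{x^{k/2-1}\exp(-x/2)}{2^{k/2}\,\Gamma(k/2)}$ | 0 | |
| Double exponential | ddexp(location = µ, rate = τ), $\tau > 0$ | $\frac{\tau}{2}\exp(-\tau \lvert x-\mu \rvert)$ | | |
| Dirichlet | ddirch(alpha = α), $\alpha_j \geq 0$ | $\Gamma\left(\sum_j \alpha_j\right) \prod_j \frac{x_j^{\alpha_j-1}}{\Gamma(\alpha_j)}$ | 0 | |
| Exponential | dexp(rate = λ), $\lambda > 0$ | $\lambda \exp(-\lambda x)$ | 0 | |
| Flat | dflat() | $\propto 1$ (improper) | | |
| Gamma | dgamma(shape = r, rate = λ), $\lambda > 0$, $r > 0$ | $\frac{\lambda^r x^{r-1}\exp(-\lambda x)}{\Gamma(r)}$ | 0 | |
| Half flat | dhalfflat() | $\propto 1$ (improper) | 0 | |
| Inverse gamma | dinvgamma(shape = r, scale = λ), $\lambda > 0$, $r > 0$ | $\frac{\lambda^r x^{-(r+1)}\exp(-\lambda/x)}{\Gamma(r)}$ | 0 | |
| Logistic | dlogis(location = µ, rate = τ), $\tau > 0$ | $\frac{\tau \exp\{(x-\mu)\tau\}}{[1+\exp\{(x-\mu)\tau\}]^2}$ | | |
| Log-normal | dlnorm(meanlog = µ, taulog = τ), $\tau > 0$ | $\left(\frac{\tau}{2\pi}\right)^{1/2} x^{-1}\exp\{-\tau(\log(x)-\mu)^2/2\}$ | 0 | |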