NumPy Beginner's Guide (3rd Edition)

- Cover

- Copyright

- Credits

- About the Author

- About the Reviewers

- www.PacktPub.com

- Table of Contents

- Preface

- 1: NumPy Quick Start

- Python

- Time for action – installing Python on different operating systems

- The Python help system

- Time for action – using the Python help system

- Basic arithmetic and variable assignment

- Time for action – using Python as a calculator

- Time for action – assigning values to variables

- The print() function

- Time for action – printing with the print() function

- Code comments

- Time for action – commenting code

- The if statement

- Time for action – deciding with the if statement

- The for loop

- Time for action – repeating instructions with loops

- Python functions

- Time for action – defining functions

- Python modules

- Time for action – importing modules

- NumPy on Windows

- Time for action – installing NumPy, matplotlib, SciPy, and IPython on Windows

- NumPy on Linux

- Time for action – installing NumPy, matplotlib, SciPy, and IPython on Linux

- NumPy on Mac OS X

- Time for action – installing NumPy, SciPy, matplotlib, and IPython with MacPorts or Fink

- Building from source

- Arrays

- Time for action – adding vectors

- IPython – an interactive shell

- Online resources and help

- Summary

- 2: Beginning with NumPy Fundamentals

- NumPy array object

- Time for action – creating a multidimensional array

- Time for action – creating a record data type

- One-dimensional slicing and indexing

- Time for action – slicing and indexing multidimensional arrays

- Time for action – manipulating array shapes

- Time for action – stacking arrays

- Time for action – splitting arrays

- Time for action – converting arrays

- Summary

- 3: Getting Familiar with Commonly Used Functions

- File I/O

- Time for action – reading and writing files

- Comma Separated Values files

- Time for action – loading from CSV files

- Volume Weighted Average Price

- Time for action – calculating volume weighted average price

- Value range

- Time for action – finding highest and lowest values

- Statistics

- Time for action – doing simple statistics

- Stock returns

- Time for action – analyzing stock returns

- Dates

- Time for action – dealing with dates

- Time for action – using the datetime64 data type

- Weekly summary

- Time for action – summarizing data

- Average True Range

- Time for action – calculating the average true range

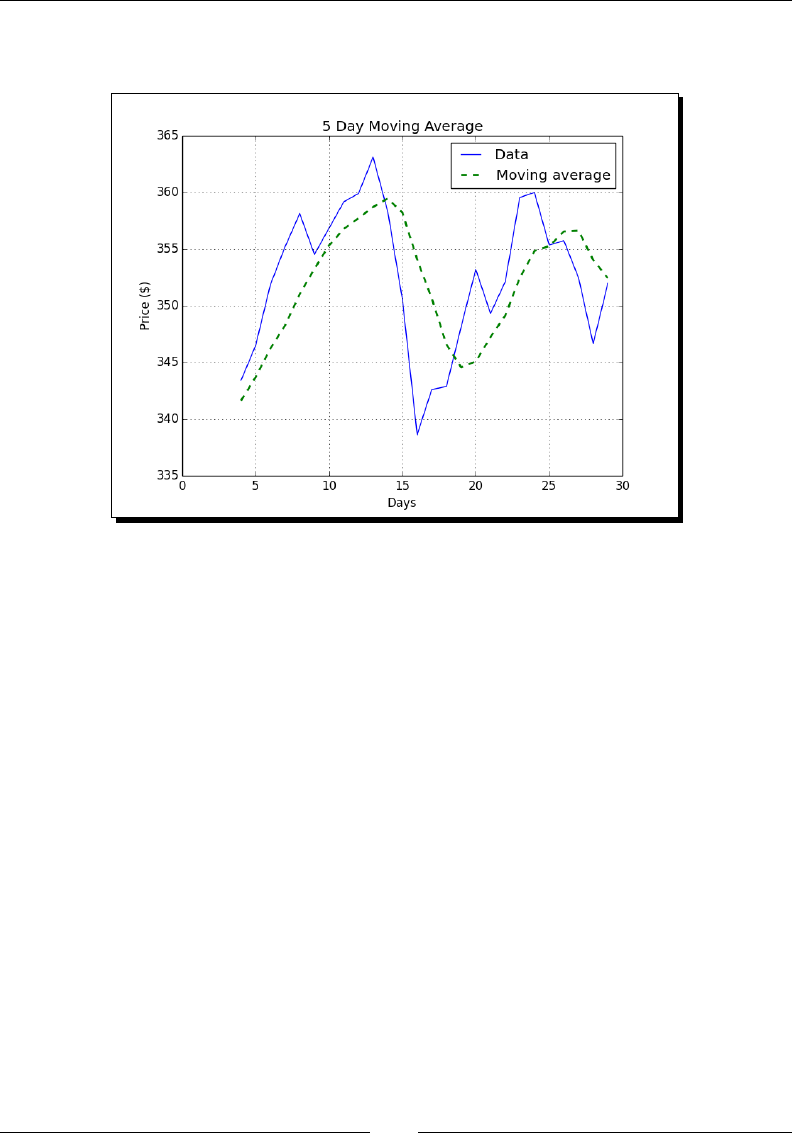

- Simple Moving Average

- Time for action – computing the simple moving average

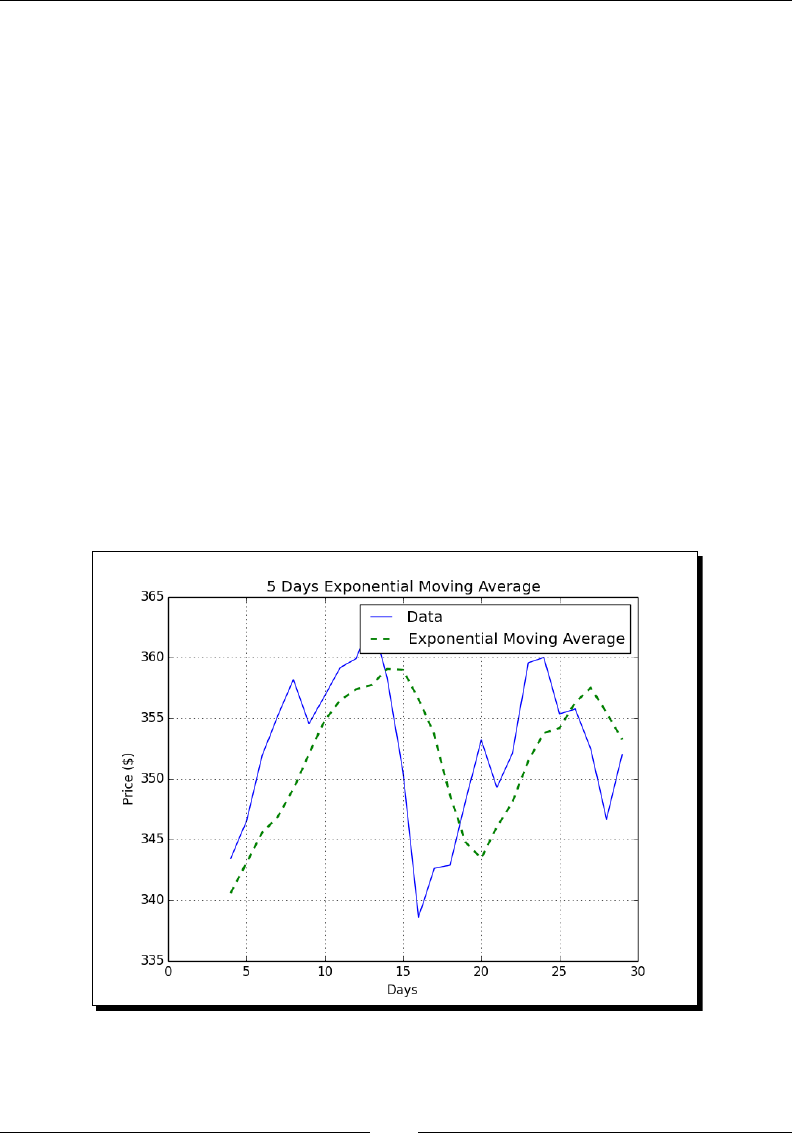

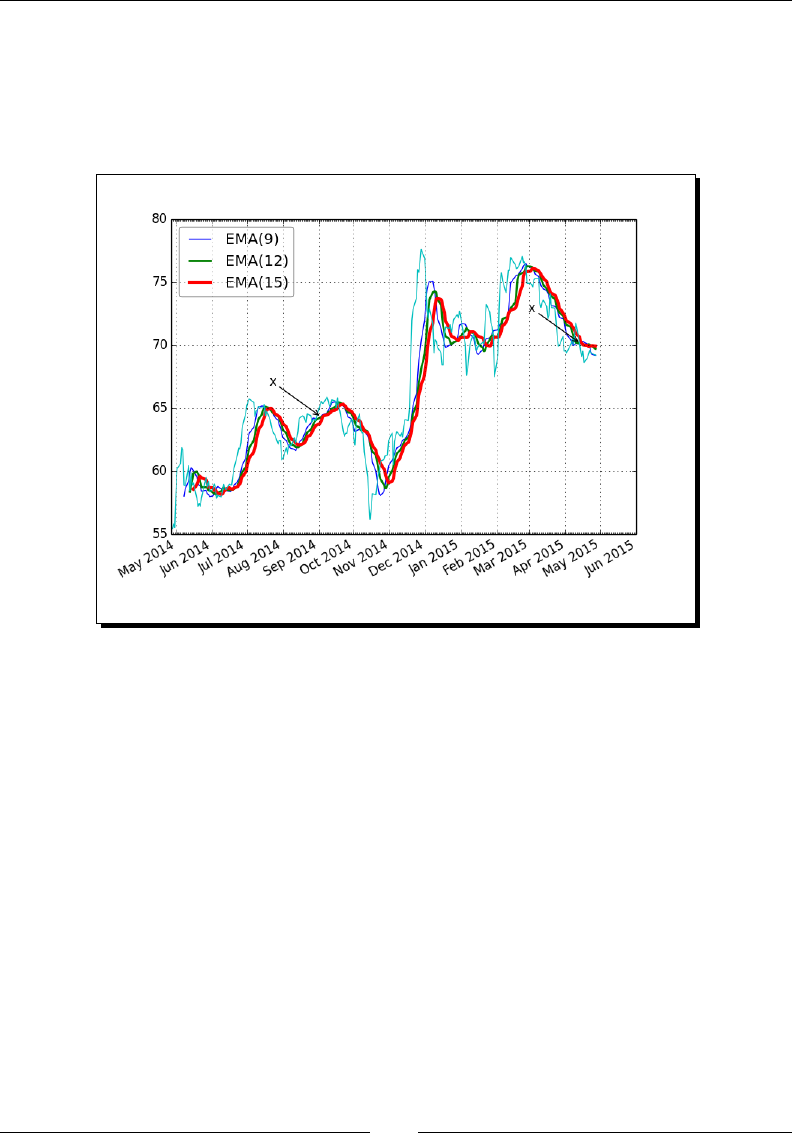

- Exponential Moving Average

- Time for action – calculating the exponential moving average

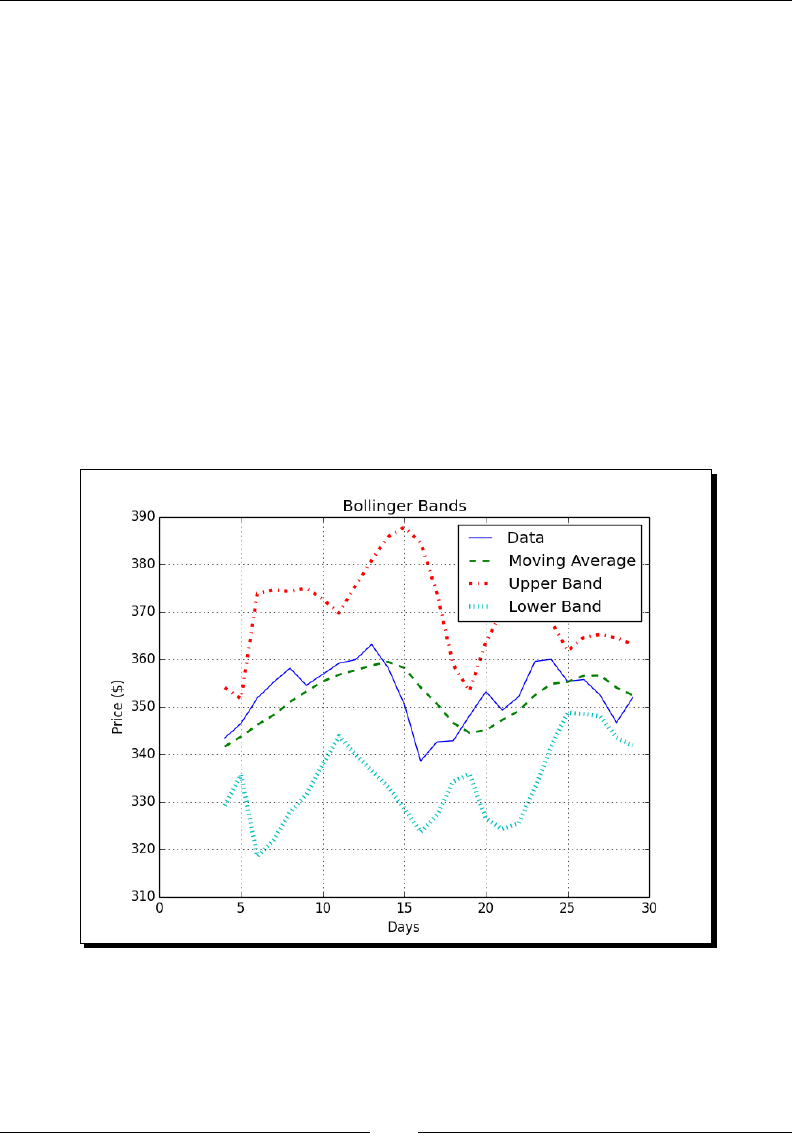

- Bollinger Bands

- Time for action – enveloping with Bollinger bands

- Linear model

- Time for action – predicting price with a linear model

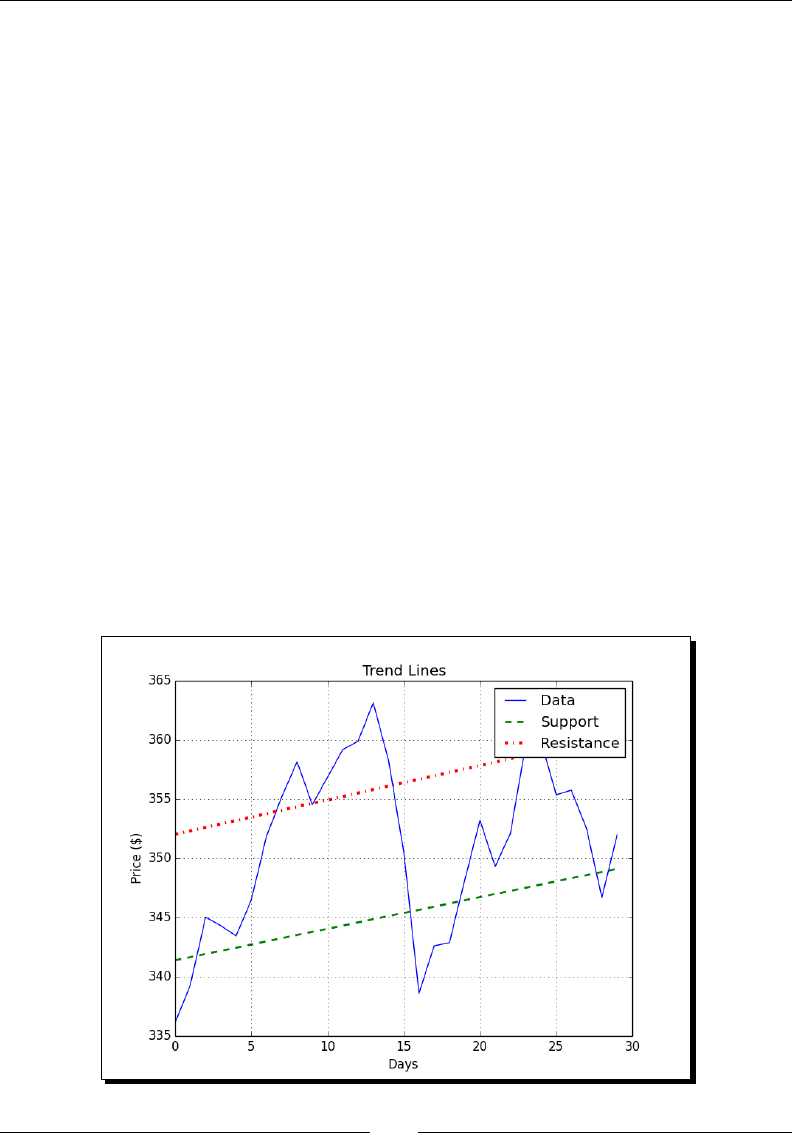

- Trend lines

- Time for action – drawing trend lines

- Methods of ndarray

- Time for action – clipping and compressing arrays

- Factorial

- Time for action – calculating the factorial

- Missing values and Jackknife resampling

- Time for action – handling NaNs with the nanmean(), nanvar(), and nanstd() functions

- Summary

- 4: Convenience Functions for Your Convenience

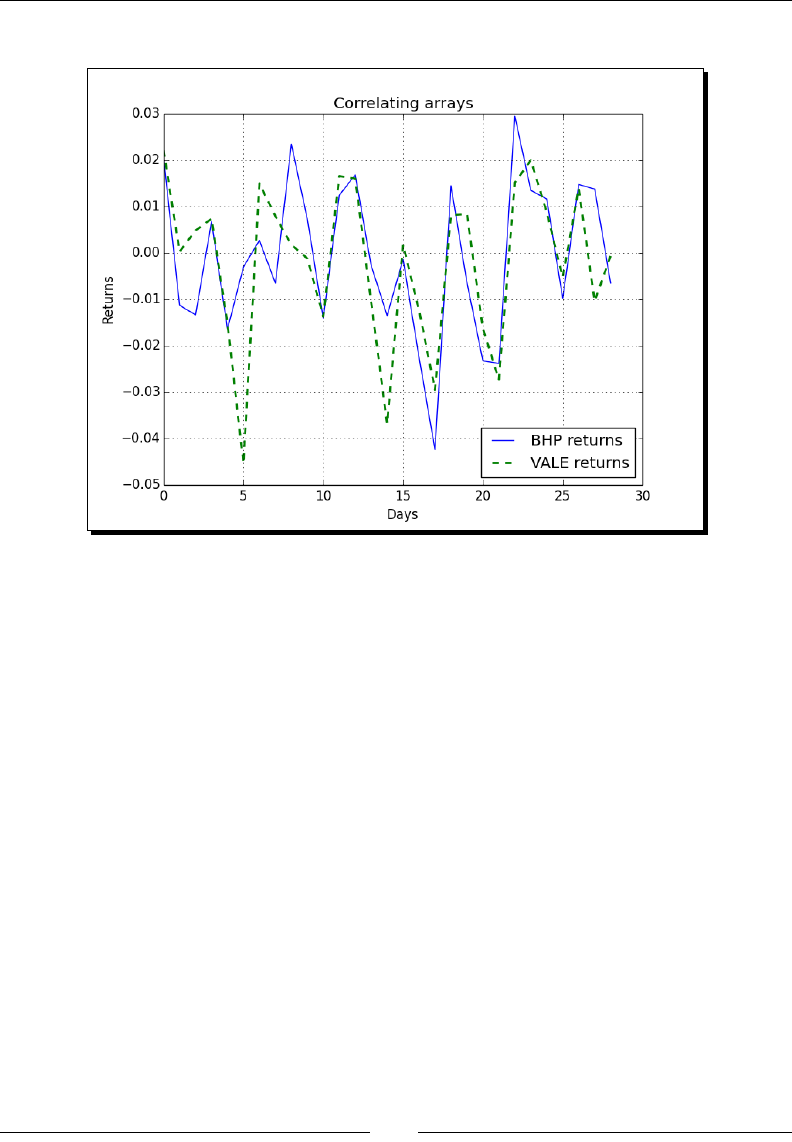

- Correlation

- Time for action – trading correlated pairs

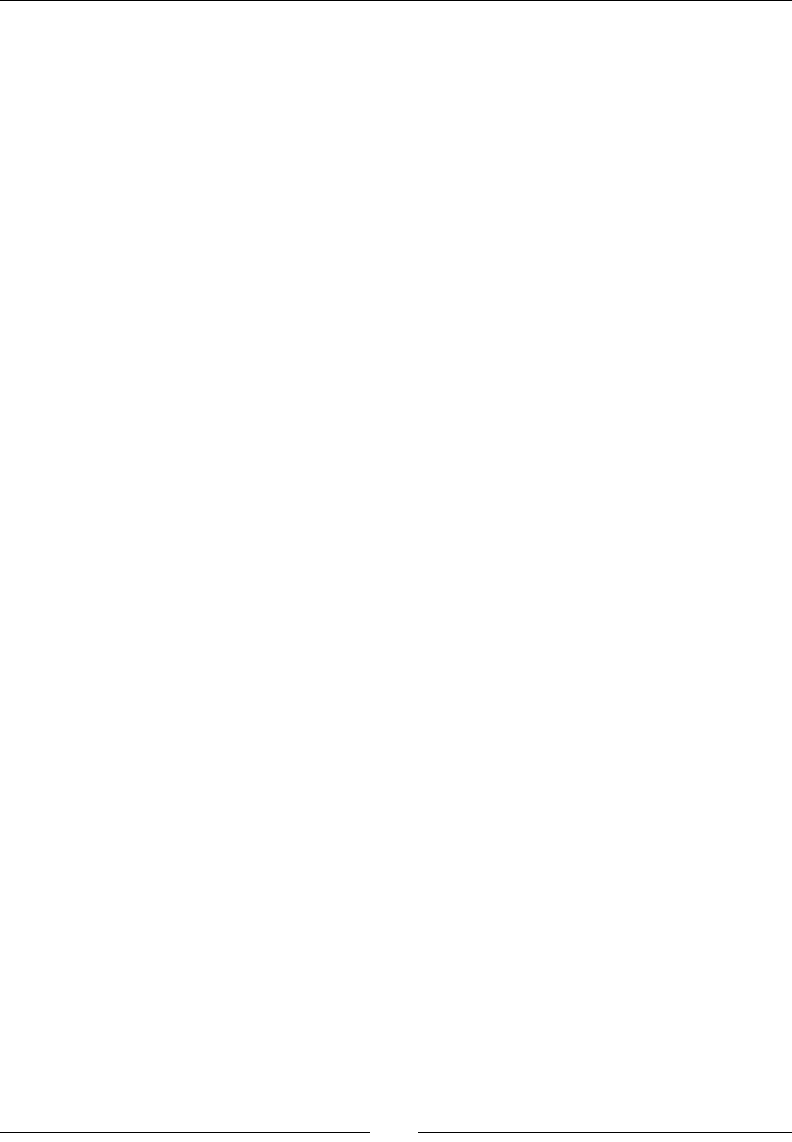

- Polynomials

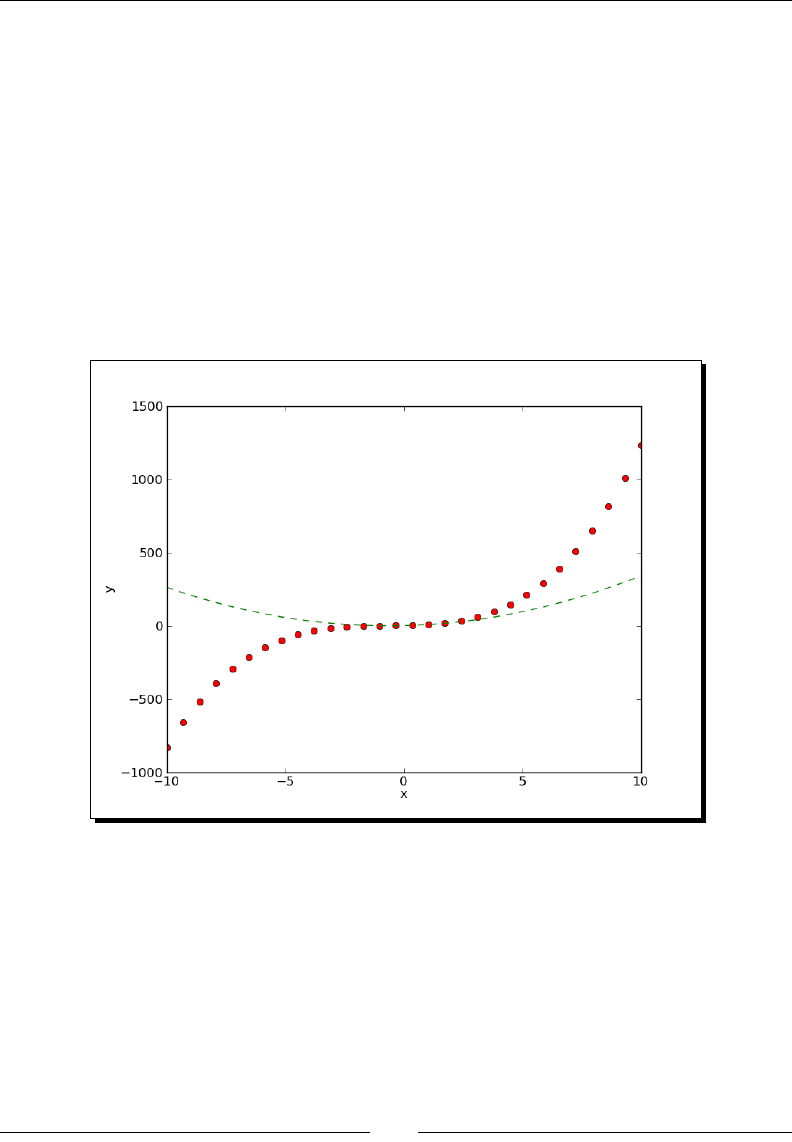

- Time for action – fitting to polynomials

- On-balance Volume

- Time for action – balancing volume

- Simulation

- Time for action – avoiding loops with vectorize()

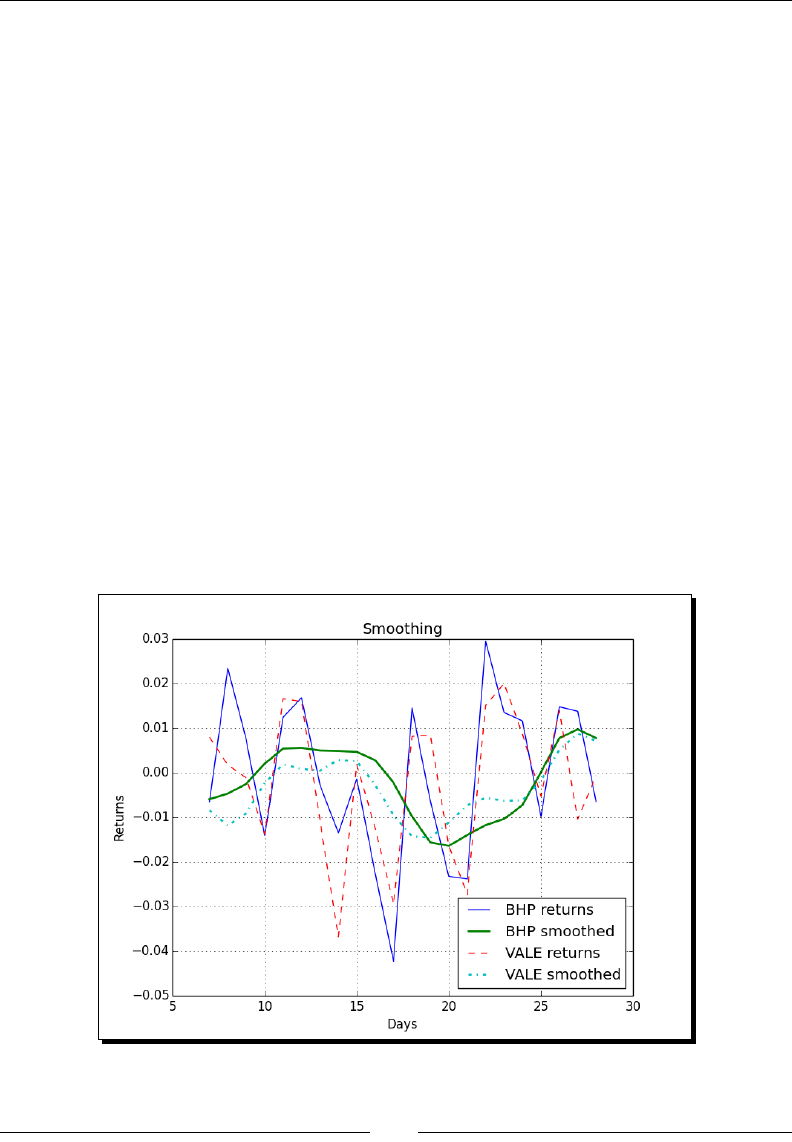

- Smoothing

- Time for action – smoothing with the hanning() function

- Initialization

- Time for action – creating value initialized arrays with the full() and full_like() functions

- Summary

- 5: Working with Matrices and ufuncs

- Matrices

- Time for action – creating matrices

- Creating a matrix from other matrices

- Time for action – creating a matrix from other matrices

- Universal functions

- Time for action – creating universal functions

- Universal function methods

- Time for action – applying the ufunc methods on the add function

- Arithmetic functions

- Time for action – dividing arrays

- Modulo operation

- Time for action – computing the modulo

- Fibonacci numbers

- Time for action – computing Fibonacci numbers

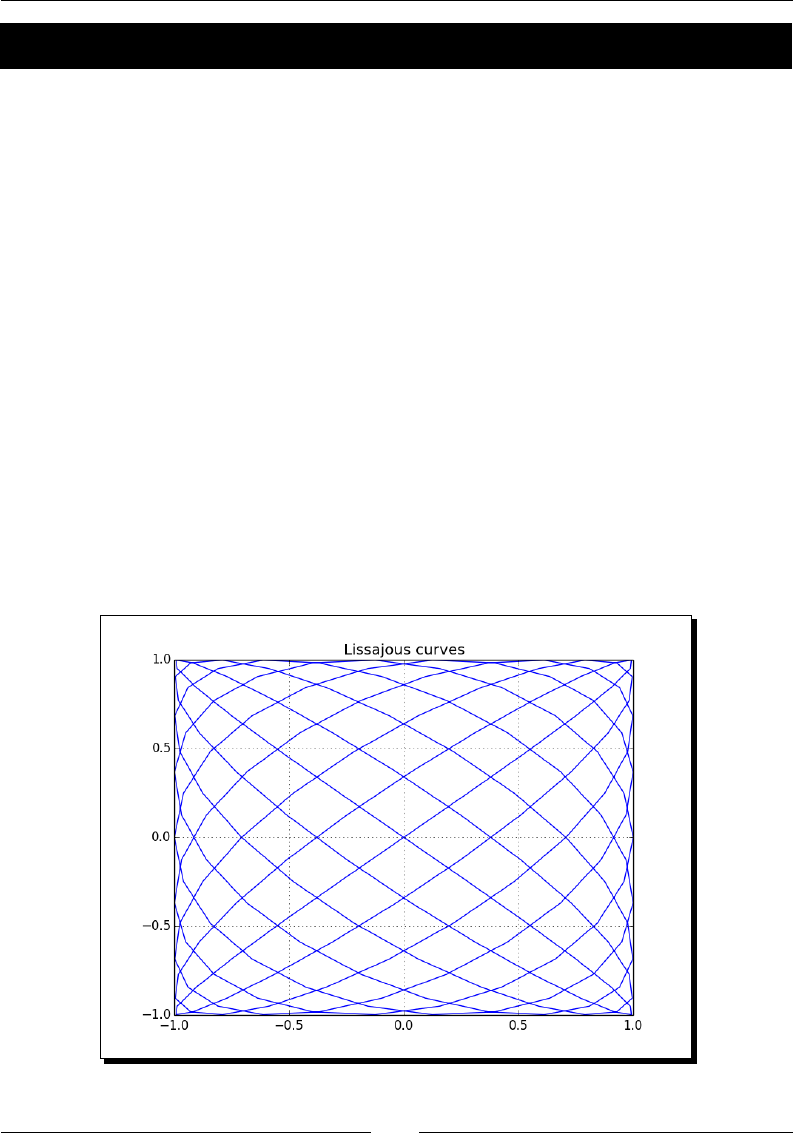

- Lissajous curves

- Time for action – drawing Lissajous curves

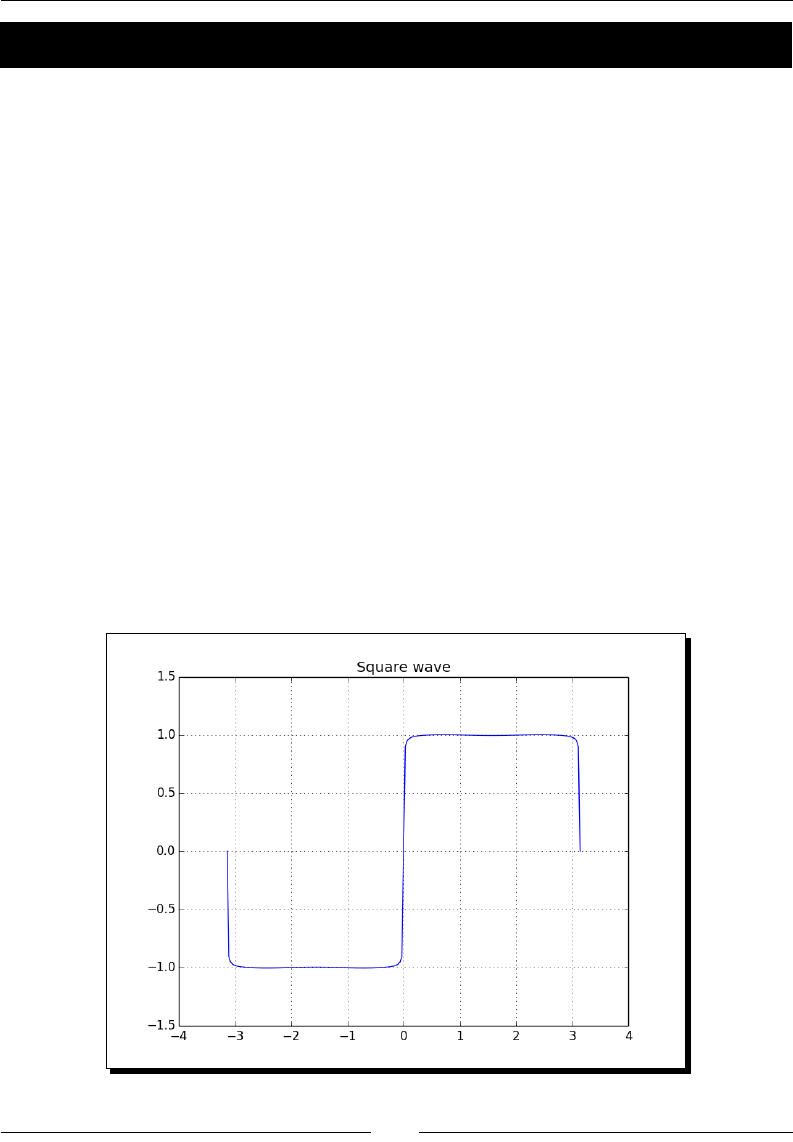

- Square waves

- Time for action – drawing a square wave

- Sawtooth and triangle waves

- Time for action – drawing sawtooth and triangle waves

- Bitwise and comparison functions

- Time for action – twiddling bits

- Fancy indexing

- Time for action – fancy indexing in-place for ufuncs with the at() method

- Summary

- 6: Moving Further with NumPy Modules

- Linear algebra

- Time for action – inverting matrices

- Solving linear systems

- Time for action – solving a linear system

- Finding eigenvalues and eigenvectors

- Time for action – determining eigenvalues and eigenvectors

- Singular value decomposition

- Time for action – decomposing a matrix

- Pseudo inverse

- Time for action – computing the pseudo inverse of a matrix

- Determinants

- Time for action – calculating the determinant of a matrix

- Fast Fourier transform

- Time for action – calculating the Fourier transform

- Shifting

- Time for action – shifting frequencies

- Random numbers

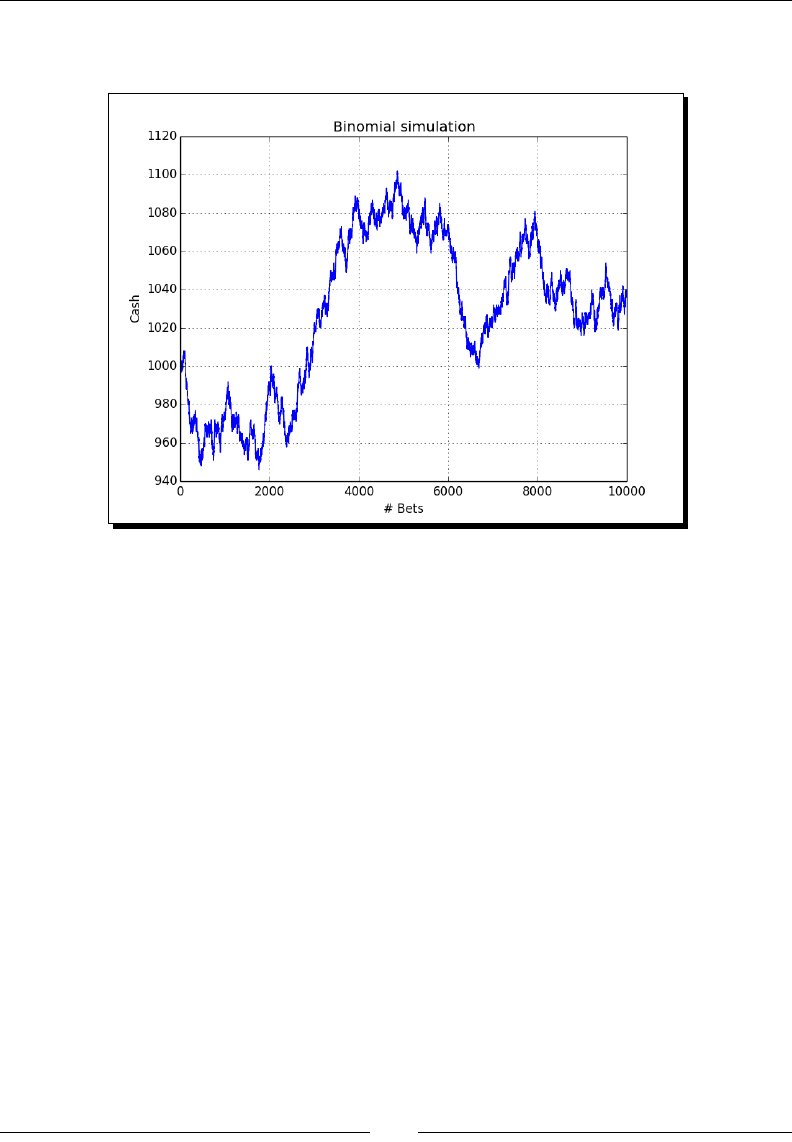

- Time for action – gambling with the binomial

- Hypergeometric distribution

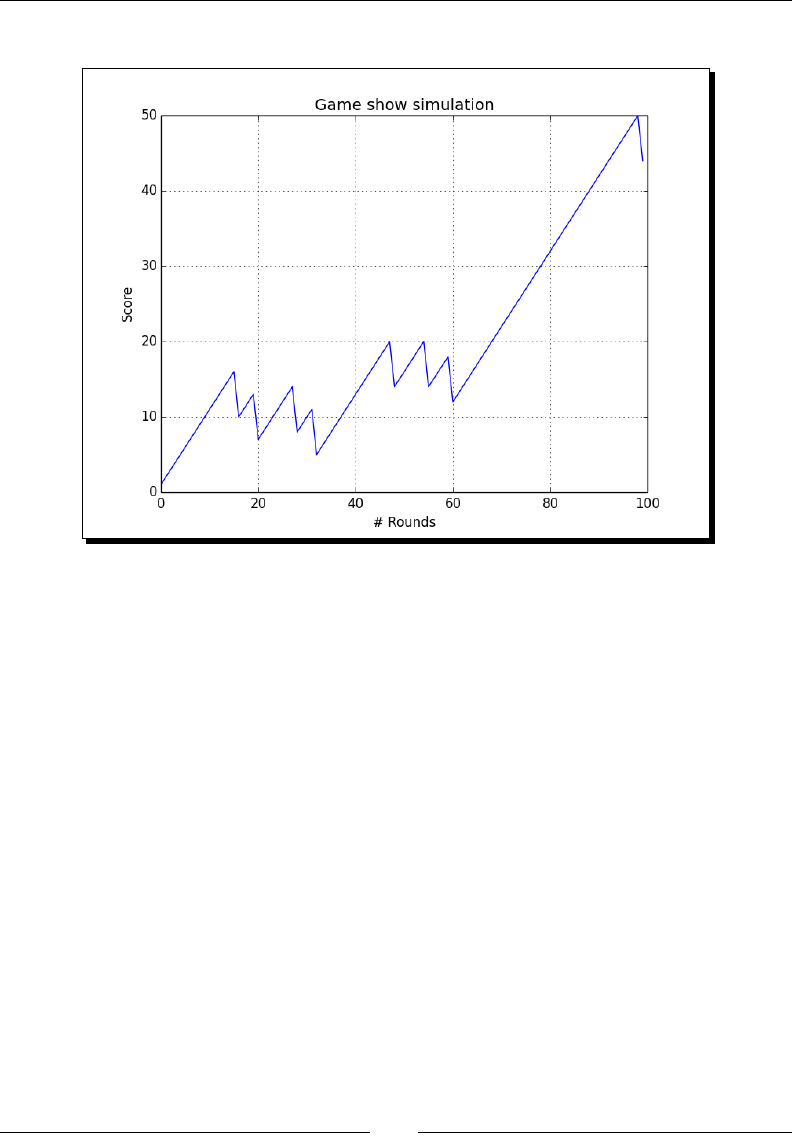

- Time for action – simulating a game show

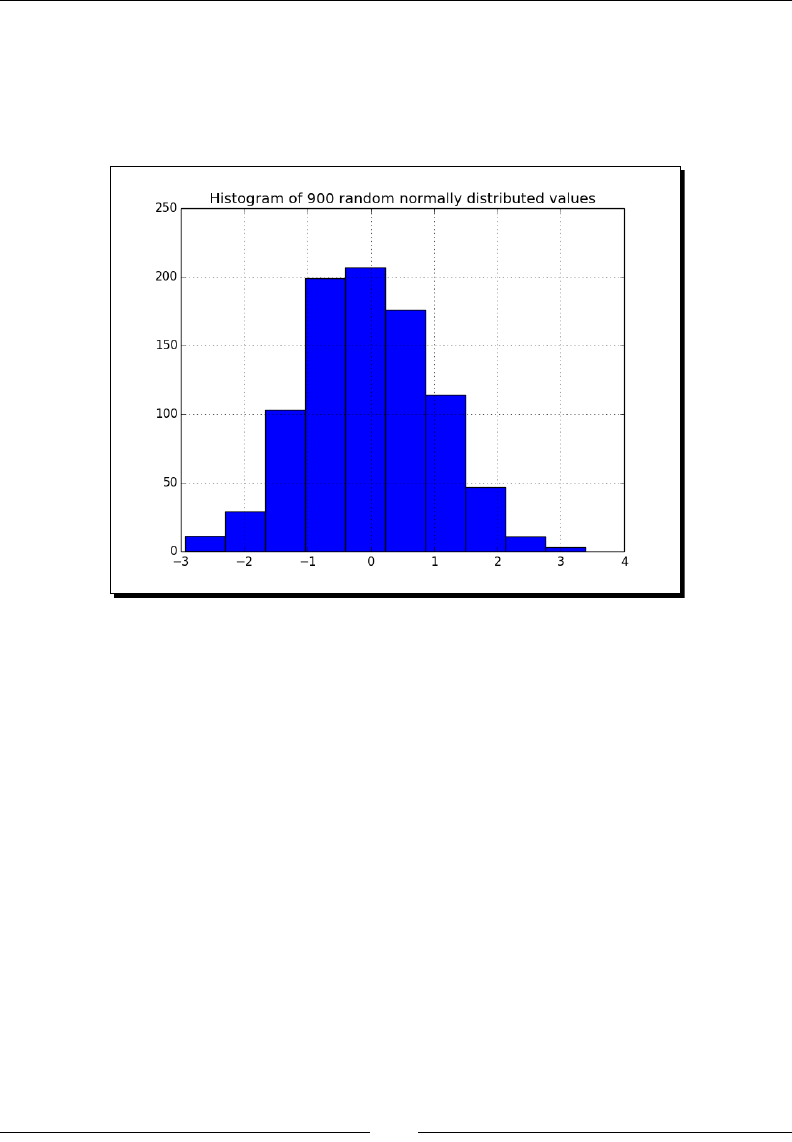

- Continuous distributions

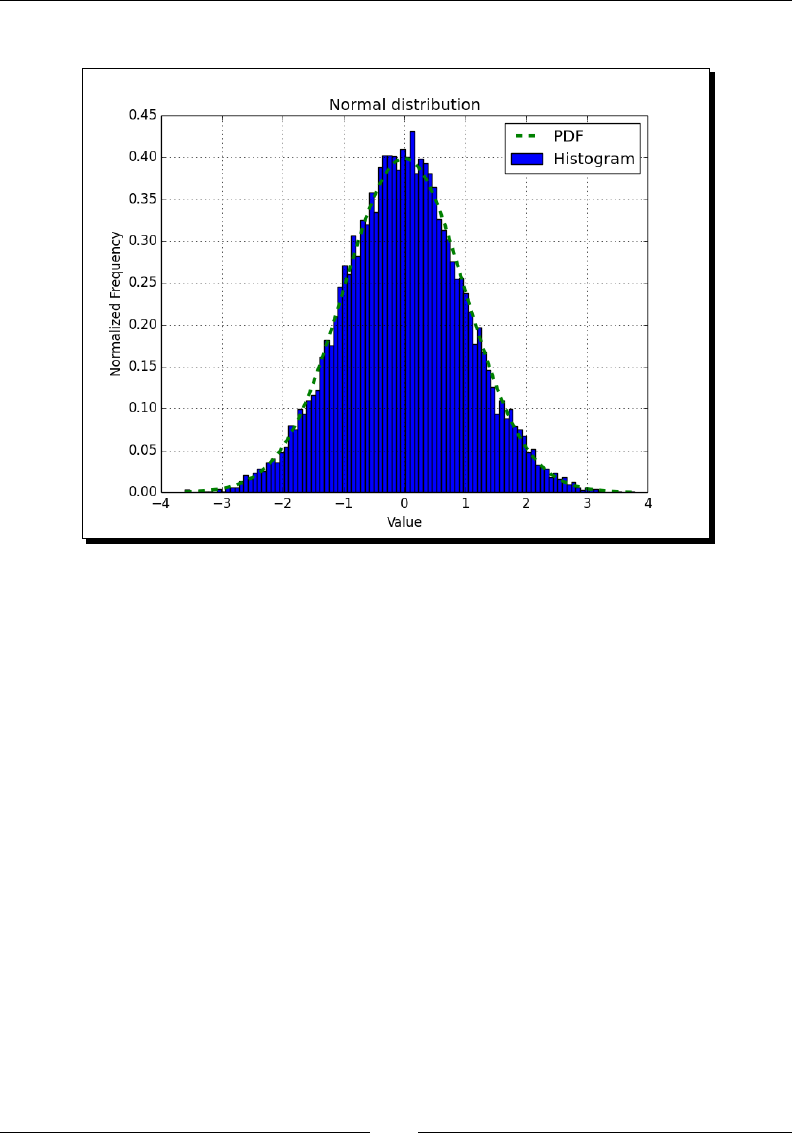

- Time for action – drawing a normal distribution

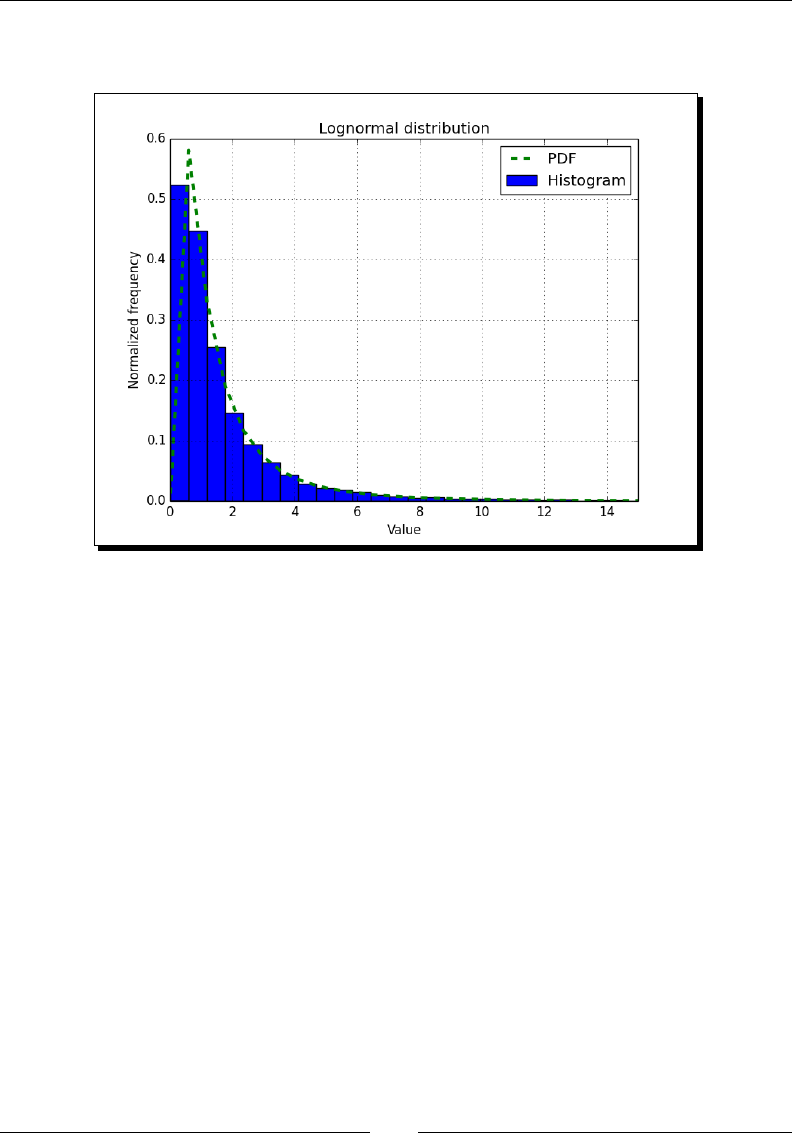

- Lognormal distribution

- Time for action – drawing the lognormal distribution

- Bootstrapping in statistics

- Time for action – sampling with numpy.random.choice()

- Summary

- 7: Peeking Into Special Routines

- Sorting

- Time for action – sorting lexically

- Time for action – partial sorting via selection for a fast median with the partition() function

- Complex numbers

- Time for action – sorting complex numbers

- Searching

- Time for action – using searchsorted

- Array elements extraction

- Time for action – extracting elements from an array

- Financial functions

- Time for action – determining future value

- Present value

- Time for action – getting the present value

- Net present value

- Time for action – calculating the net present value

- Internal rate of return

- Time for action – determining the internal rate of return

- Periodic payments

- Time for action – calculating the periodic payments

- Number of payments

- Time for action – determining the number of periodic payments

- Interest rate

- Time for action – figuring out the rate

- Window functions

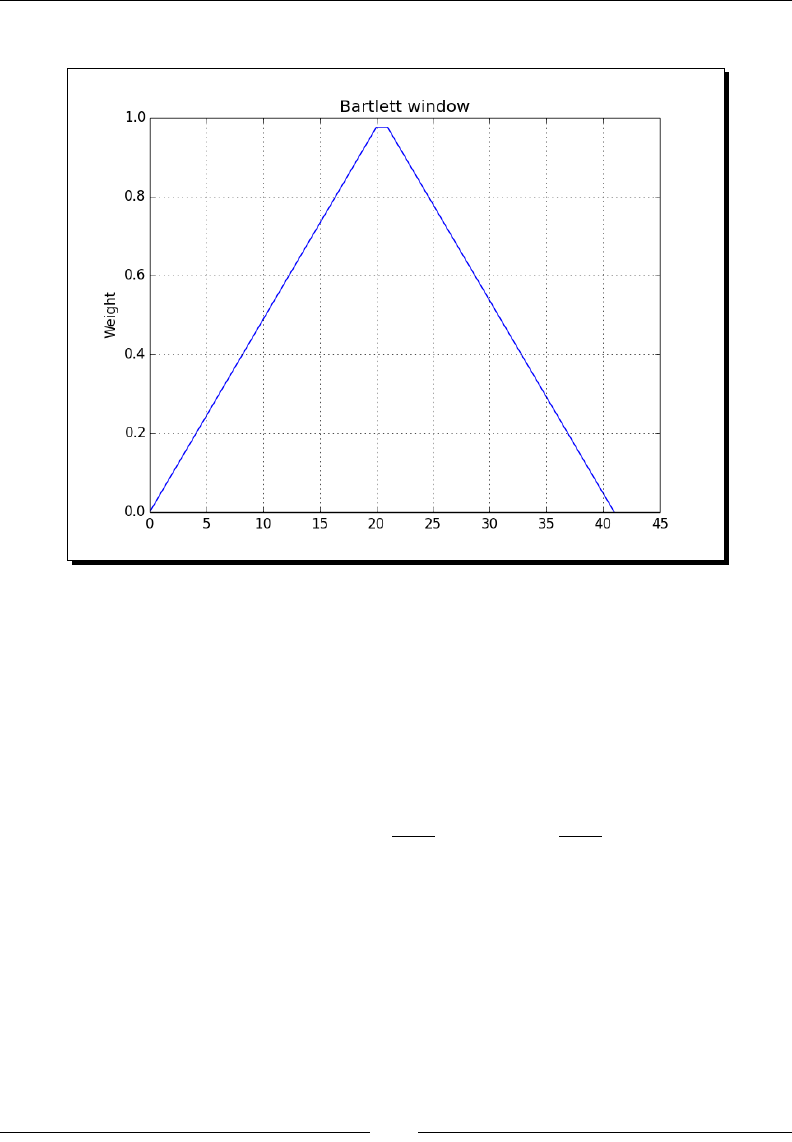

- Time for action – plotting the Bartlett window

- Blackman window

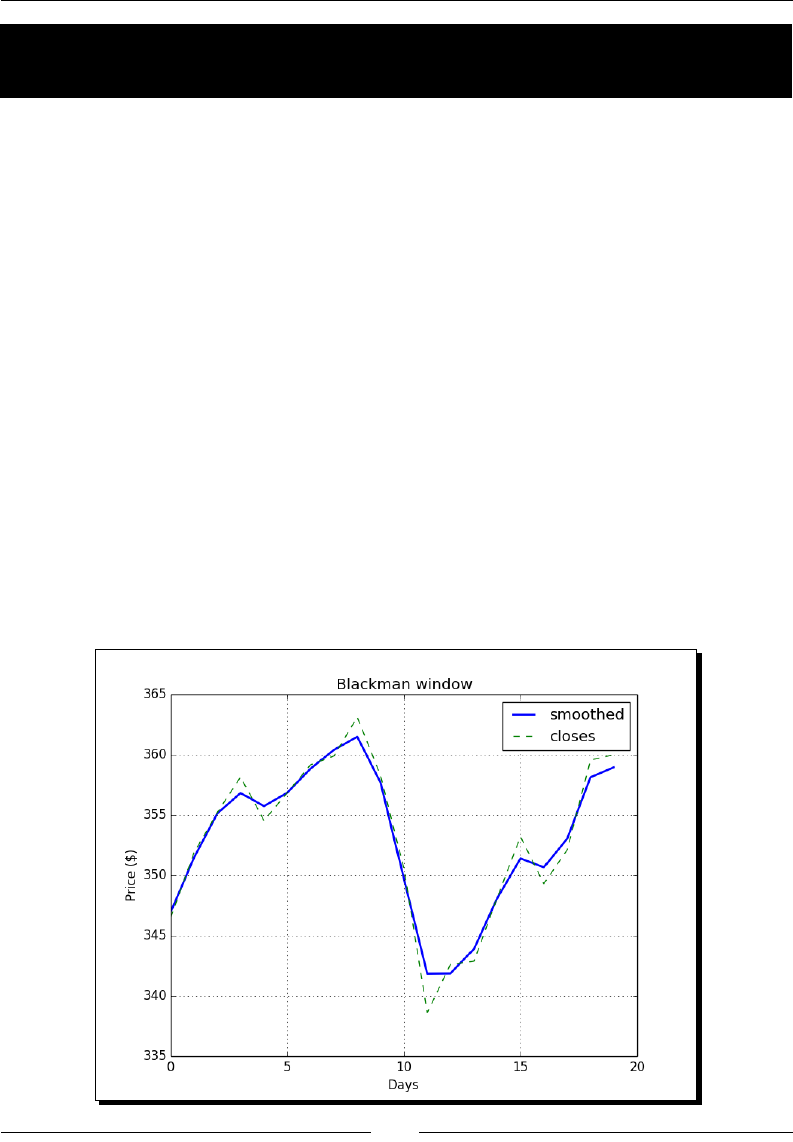

- Time for action – smoothing stock prices with the Blackman window

- Hamming window

- Time for action – plotting the Hamming window

- Kaiser window

- Time for action – plotting the Kaiser window

- Special mathematical functions

- Time for action – plotting the modified Bessel function

- Sinc

- Time for action – plotting the sinc function

- Summary

- 8: Assure Quality with Testing

- Assert functions

- Time for action – asserting almost equal

- Approximately equal arrays

- Time for action – asserting approximately equal

- Almost equal arrays

- Time for action – asserting arrays almost equal

- Equal arrays

- Time for action – comparing arrays

- Ordering arrays

- Time for action – checking the array order

- Objects comparison

- Time for action – comparing objects

- String comparison

- Time for action – comparing strings

- Floating-point comparisons

- Time for action – comparing with assert_array_almost_equal_nulp

- Comparison of floats with more ULPs

- Time for action – comparing using maxulp of 2

- Unit tests

- Time for action – writing a unit test

- Nose tests decorators

- Time for action – decorating tests

- Docstrings

- Time for action – executing doctests

- Summary

- 9: Plotting with matplotlib

- Simple plots

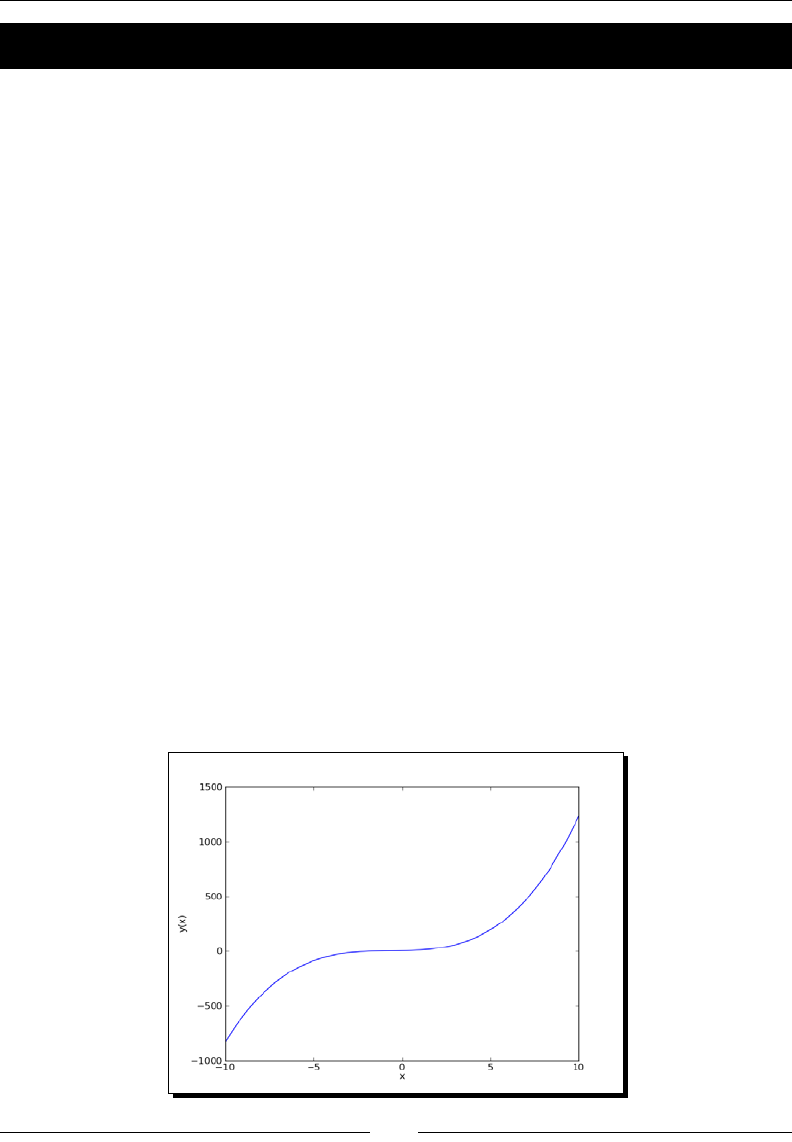

- Time for action – plotting a polynomial function

- Plot format string

- Time for action – plotting a polynomial and its derivative

- Subplots

- Time for action – plotting a polynomial and its derivatives

- Finance

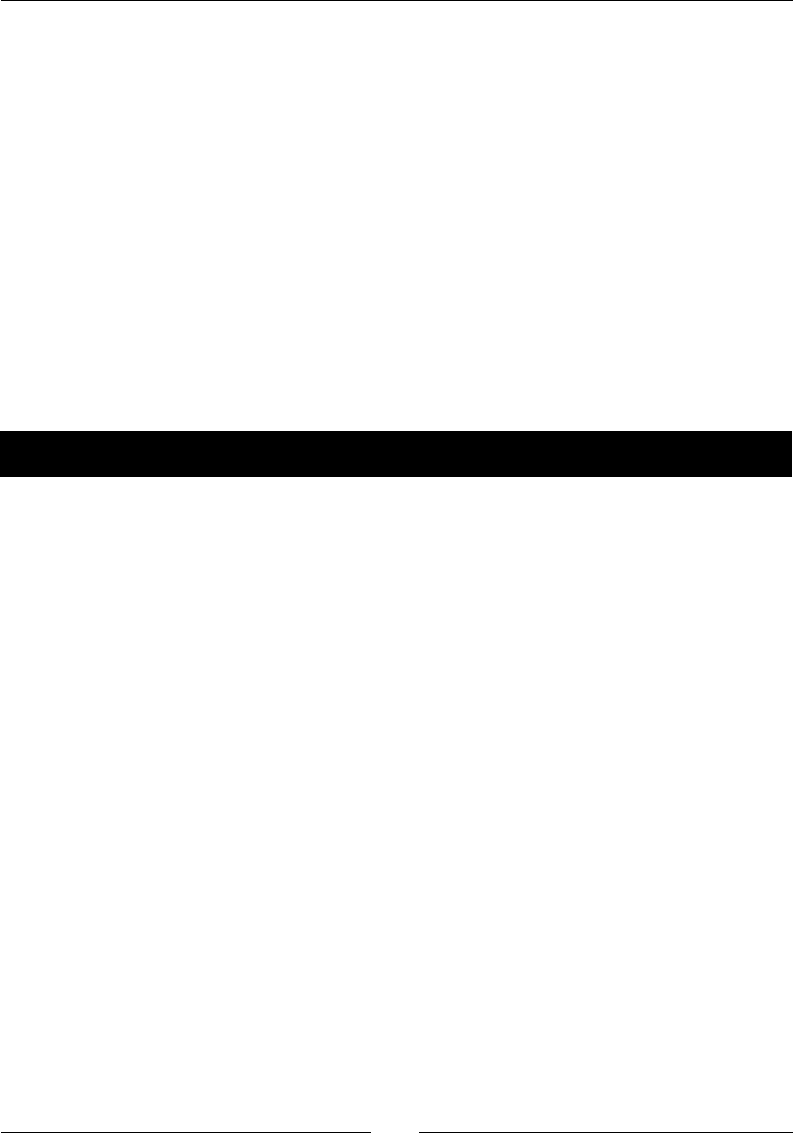

- Time for action – plotting a year's worth of stock quotes

- Histograms

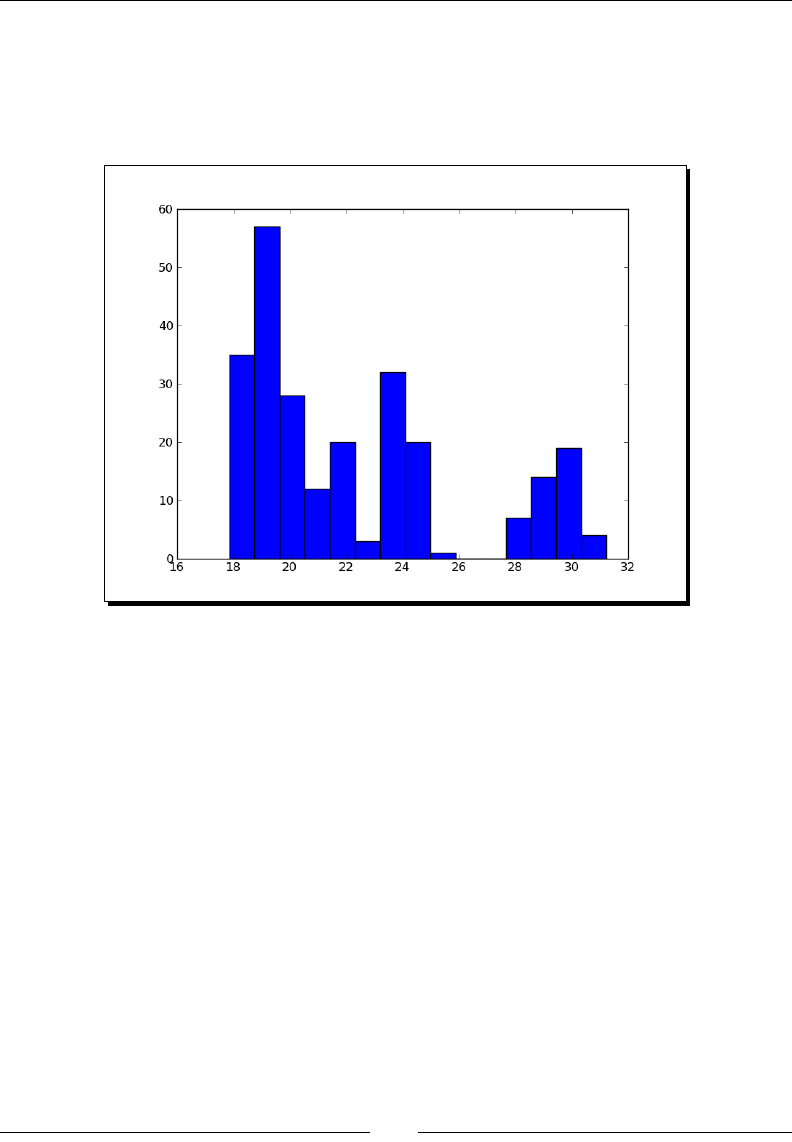

- Time for action – charting stock price distributions

- Logarithmic plots

- Time for action – plotting stock volume

- Scatter plots

- Time for action – plotting price and volume returns with a scatter plot

- Fill between

- Time for action – shading plot regions based on a condition

- Legend and annotations

- Time for action – using a legend and annotations

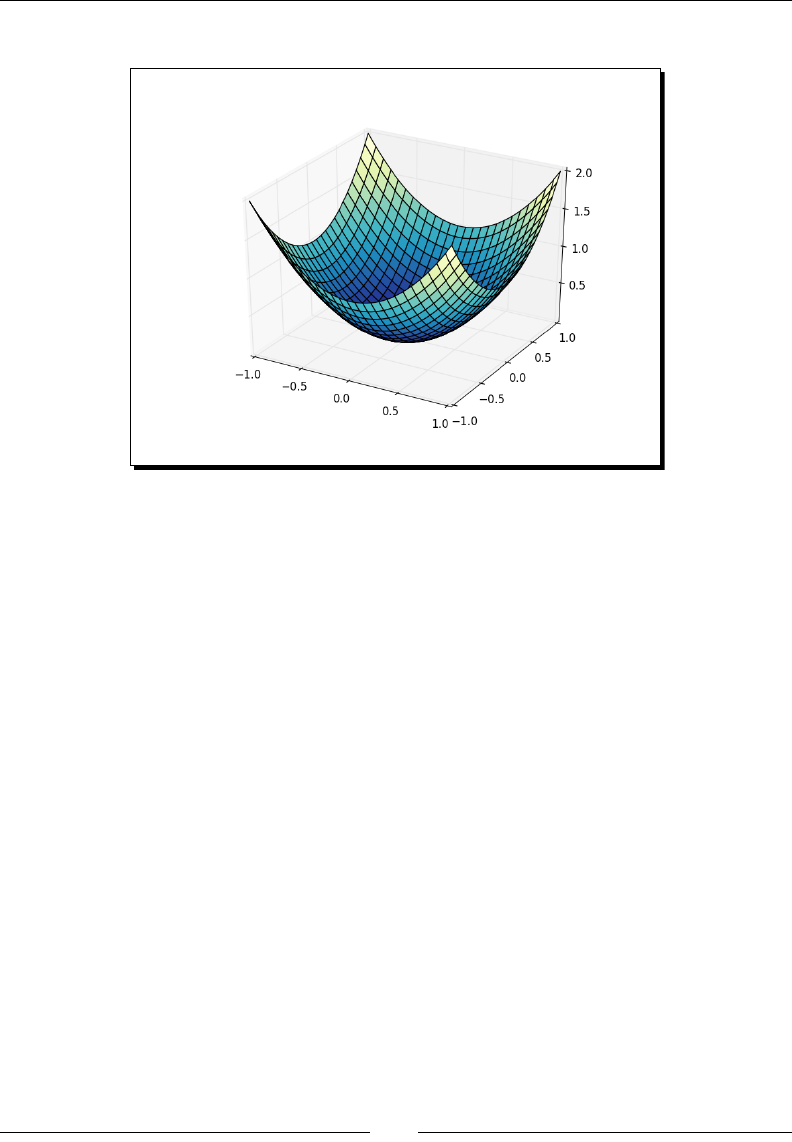

- Three-dimensional plots

- Time for action – plotting in three dimension

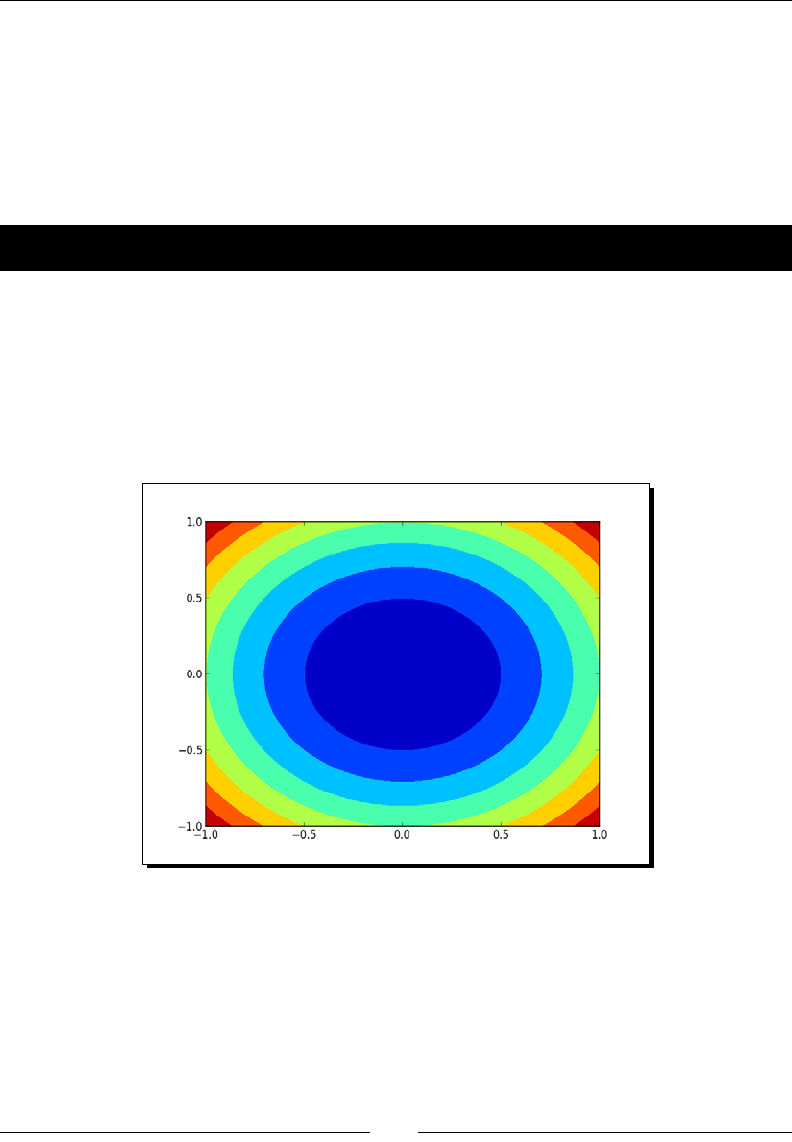

- Contour plots

- Time for action – drawing a filled contour plot

- Animation

- Time for action – animating plots

- Summary

- 10: When NumPy is Not Enough – SciPy and Beyond

- MATLAB and Octave

- Time for action – saving and loading a .mat file

- Statistics

- Time for action – analyzing random values

- Samples comparison and SciKits

- Time for action – comparing stock log returns

- Signal processing

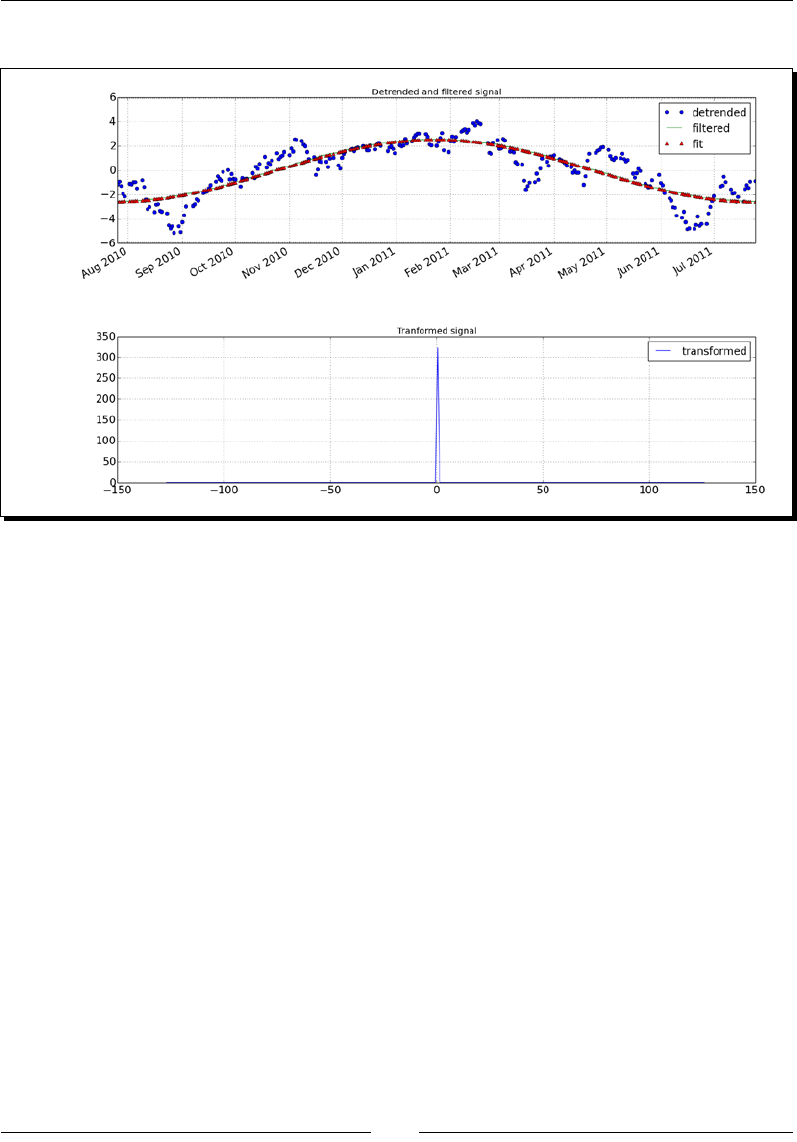

- Time for action – detecting a trend in QQQ

- Fourier analysis

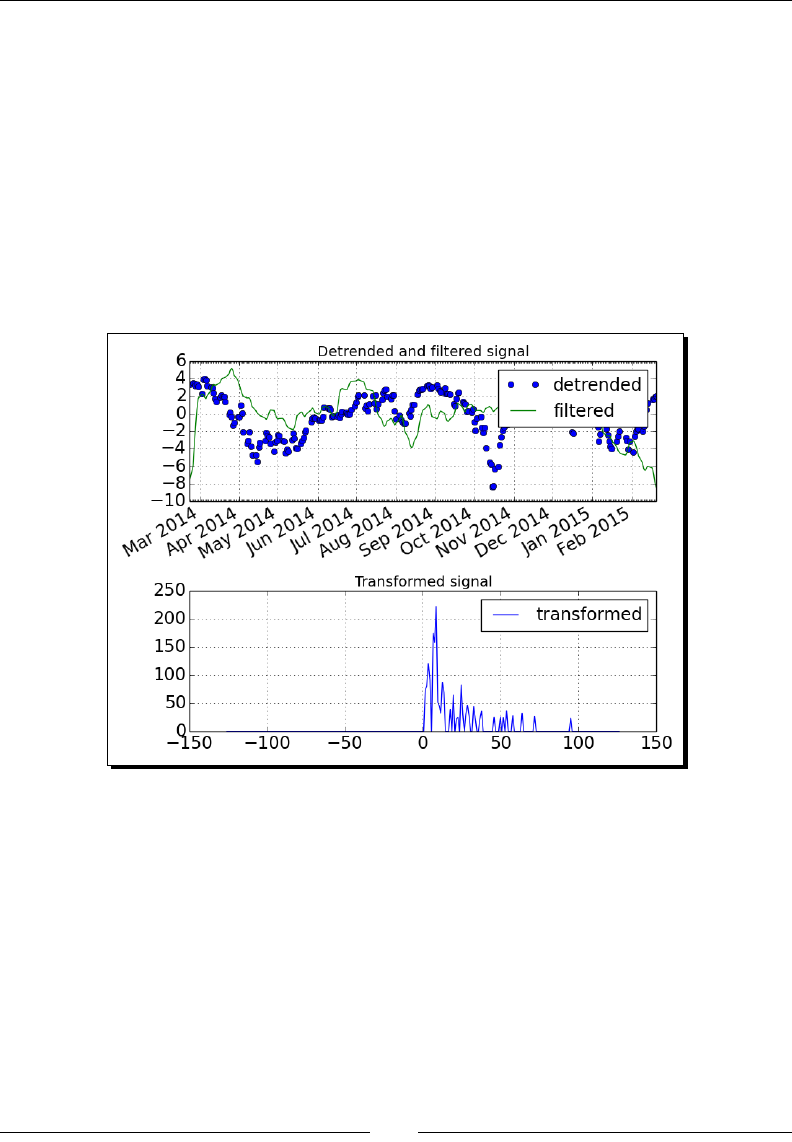

- Time for action – filtering a detrended signal

- Mathematical optimization

- Time for action – fitting to a sine

- Numerical integration

- Time for action – calculating the Gaussian integral

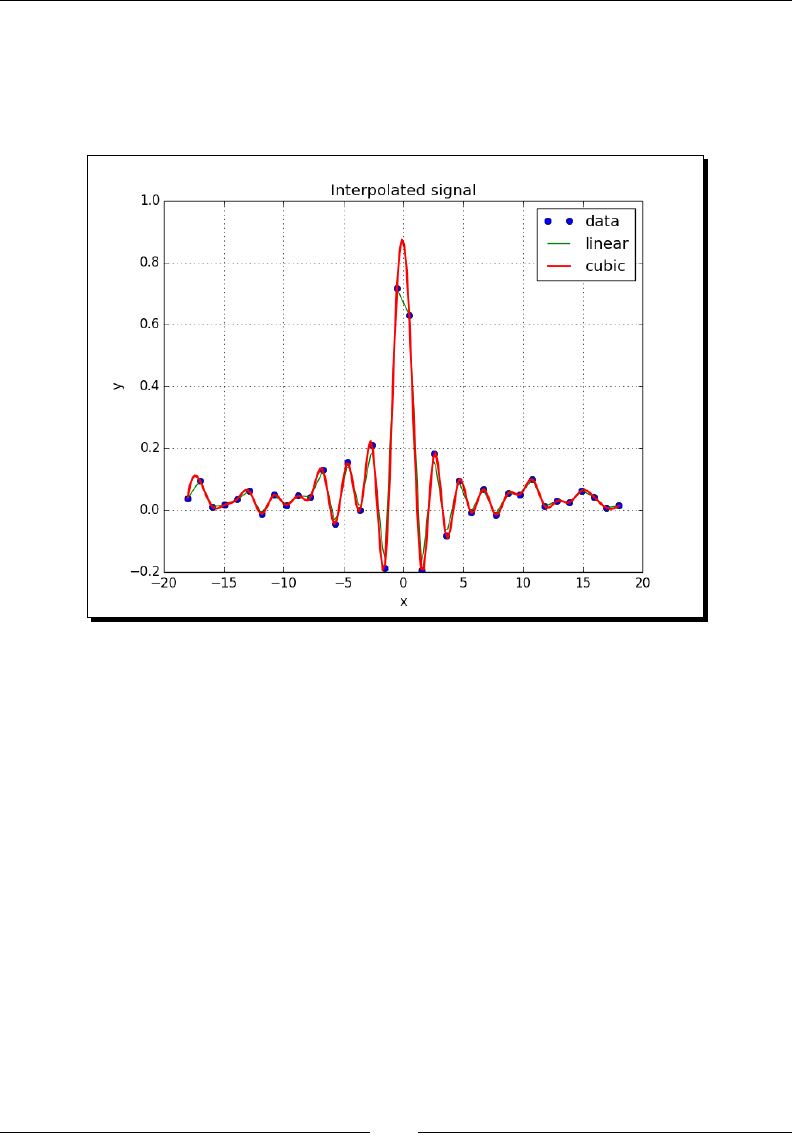

- Interpolation

- Time for action – interpolating in one dimension

- Image processing

- Time for action – manipulating Lena

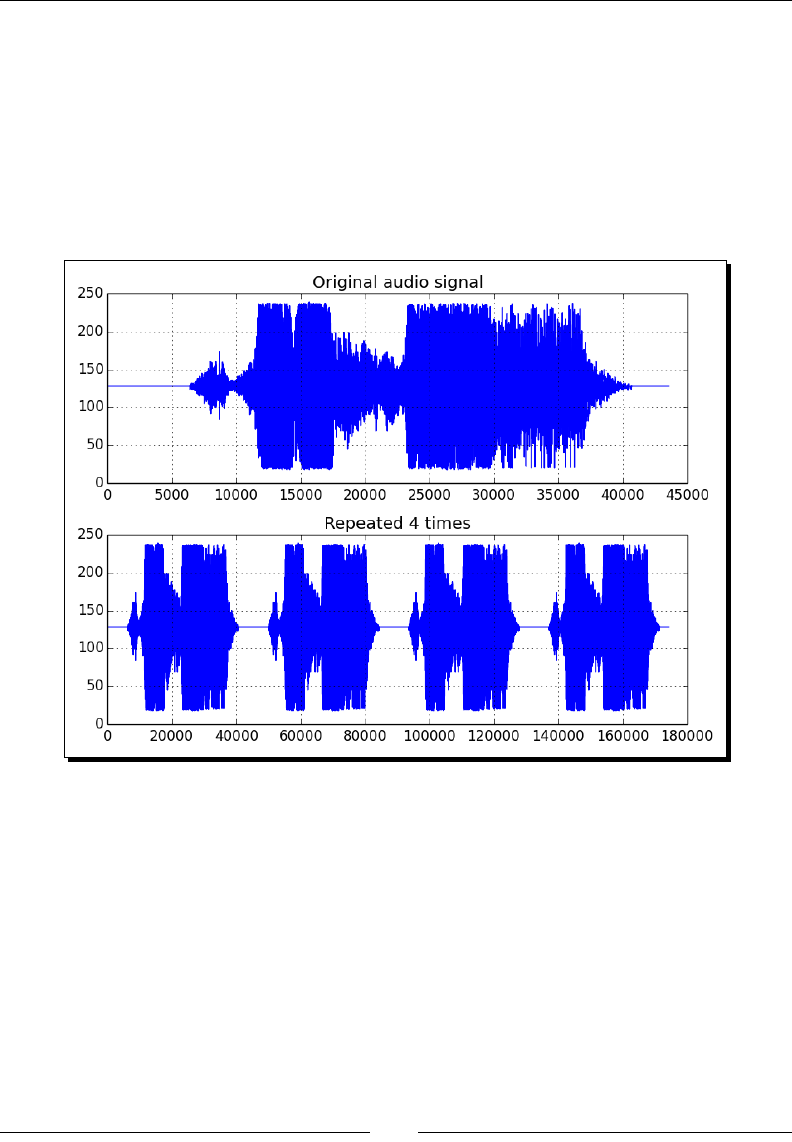

- Audio processing

- Time for action – replaying audio clips

- Summary

- 11: Playing with Pygame

- Pygame

- Time for action – installing Pygame

- Hello World

- Time for action – creating a simple game

- Animation

- Time for action – animating objects with NumPy and Pygame

- matplotlib

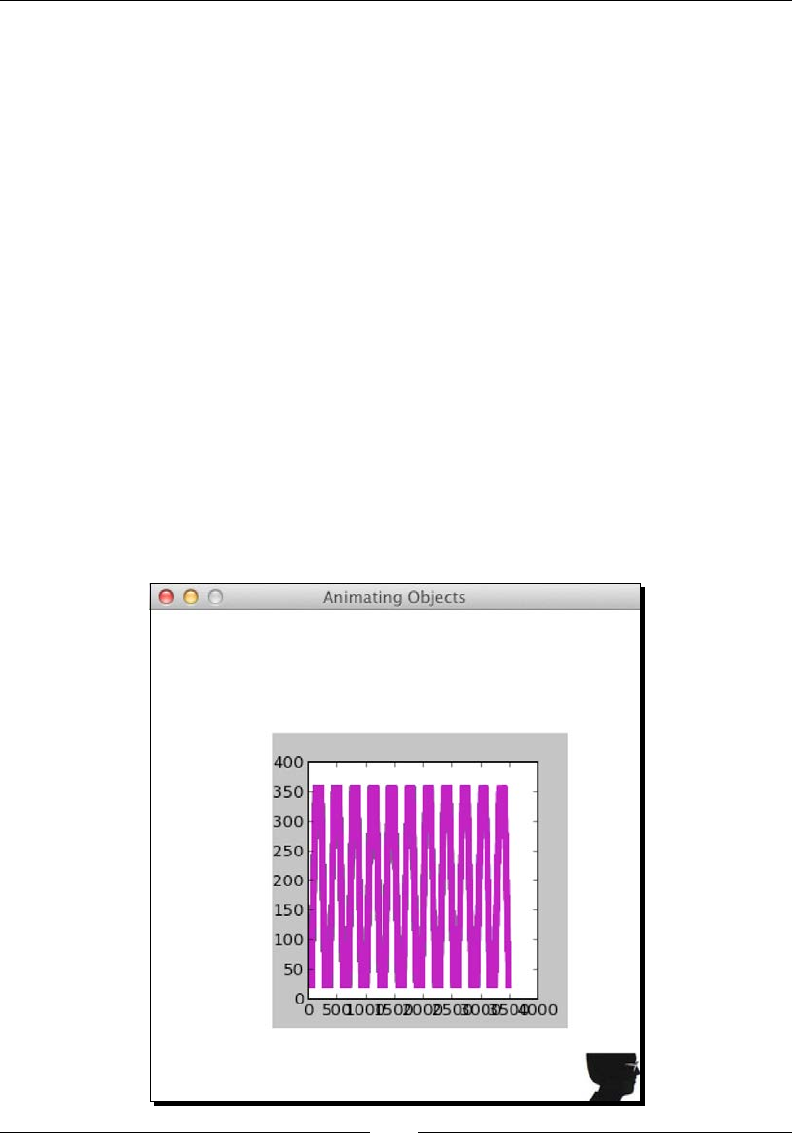

- Time for Action – using matplotlib in Pygame

- Surface pixels

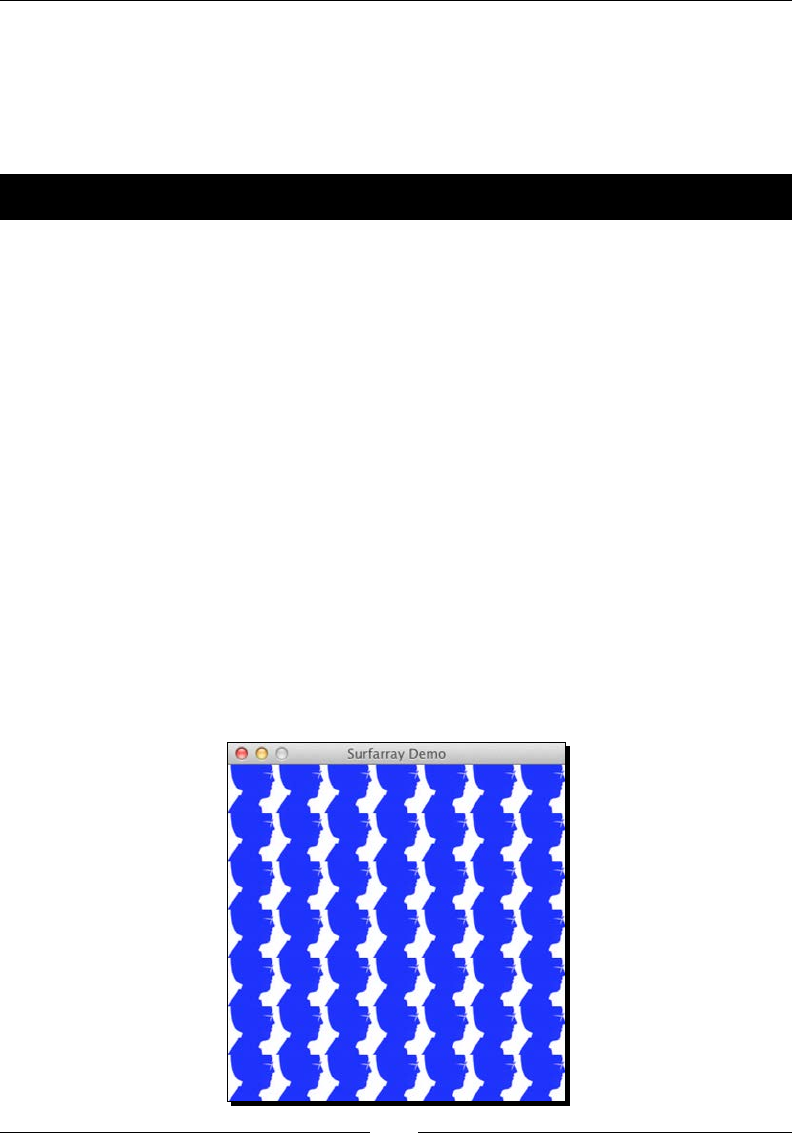

- Time for Action – accessing surface pixel data with NumPy

- Artificial Intelligence

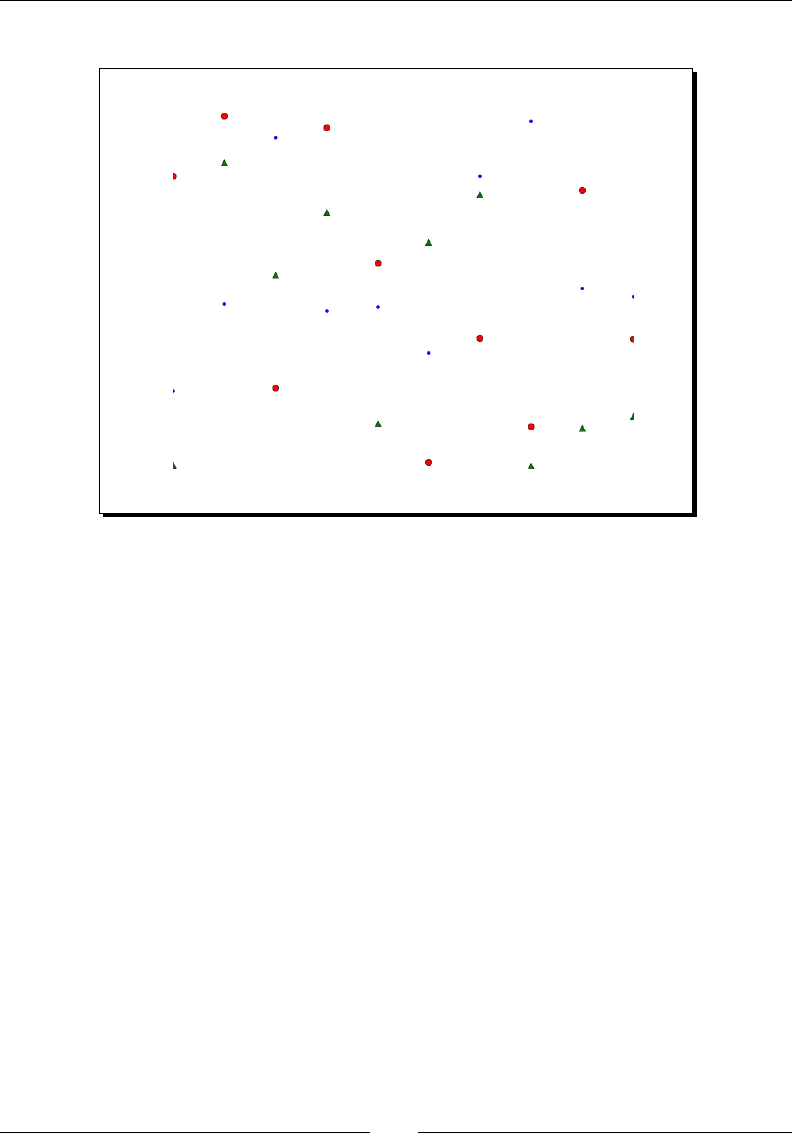

- Time for Action – clustering points

- OpenGL and Pygame

- Time for Action – drawing the Sierpinski gasket

- Simulation Game with Pygame

- Time for Action – simulating life

- Summary

- Appendix A: Pop Quiz Answers

- Appendix B: Additional Online Resources

- Appendix C: NumPy Functions' References

- Index

NumPy Beginner's Guide

Third Edition

Copyright © 2015 Packt Publishing

All rights reserved. No part of this book may be reproduced, stored in a retrieval system, or transmitted in any form or by any means, without the prior written permission of the publisher, except in the case of brief quotations embedded in critical articles or reviews.

Every effort has been made in the preparation of this book to ensure the accuracy of the information presented. However, the information contained in this book is sold without warranty, either express or implied. Neither the author, nor Packt Publishing, and its dealers and distributors will be held liable for any damages caused or alleged to be caused directly or indirectly by this book.

Packt Publishing has endeavored to provide trademark information about all of the companies and products mentioned in this book by the appropriate use of capitals. However, Packt Publishing cannot guarantee the accuracy of this information.

First published: November 2011

Second edition: April 2013

Third edition: June 2015

Production reference: 1160615

Published by Packt Publishing Ltd.

Livery Place

35 Livery Street

Birmingham B3 2PB, UK.

ISBN 978-1-78528-196-9

www.packtpub.com

Credits

Author

Ivan Idris

Reviewers

Alexandre Devert

Davide Fiacconi

Ardo Illaste

Commissioning Editor

Amarabha Banerjee

Acquisition Editors

Shaon Basu

Usha Iyer

Rebecca Youe

Content Development Editor

Neeshma Ramakrishnan

Technical Editor

Rupali R. Shrawane

Copy Editors

Charlotte Carneiro

Vikrant Phadke

Sameen Siddiqui

Project Coordinator

Shweta H. Birwatkar

Proofreader

Safis Editing

Indexer

Rekha Nair

Graphics

Sheetal Aute

Jason Monteiro

Production Coordinator

Aparna Bhagat

Cover Work

Aparna Bhagat

About the Author

Ivan Idris has an MSc in experimental physics. His graduation thesis had a strong emphasis on applied computer science. After graduating, he worked for several companies as a Java developer, data warehouse developer, and QA Analyst. His main professional interests are business intelligence, big data, and cloud computing. Ivan enjoys writing clean, testable code and interesting technical articles. He is the author of NumPy Beginner's Guide, NumPy Cookbook, Learning NumPy Array, and Python Data Analysis. You can find more information about him and a blog with a few examples of NumPy at http://ivanidris.net/wordpress/.

I would like to take this opportunity to thank the reviewers and the team at Packt Publishing for making this book possible. Also thanks go to my teachers, professors, colleagues, Wikipedia contributors, Stack Overflow contributors, and other authors who taught me science and programming. Last but not least, I would like to acknowledge my parents, family, and friends for their support.

About the Reviewers

Davide Fiacconi is completing his PhD in theoretical astrophysics from the Institute for Computational Science at the University of Zurich. He did his undergraduate and graduate studies at the University of Milan-Bicocca, studying the evolution of collisional ring galaxies using hydrodynamic numerical simulations. Davide's research now focuses on the formation and coevolution of supermassive black holes and galaxies, using both massively parallel simulations and analytical techniques. In particular, his interests include the formation of the first supermassive black hole seeds, the dynamics of binary black holes, and the evolution of high-redshift galaxies.

Ardo Illaste is a data scientist. He wants to provide everyone with easy access to data for making major life and career decisions. He completed his PhD in computational biophysics, prior to fully delving into data mining and machine learning. Ardo has worked and studied in Estonia, the USA, and Switzerland.

www.PacktPub.com

Support files, eBooks, discount offers, and more

For support files and downloads related to your book, please visit www.PacktPub.com.

Did you know that Packt offers eBook versions of every book published, with PDF and ePub files available? You can upgrade to the eBook version at www.PacktPub.com and as a print book customer, you are entitled to a discount on the eBook copy. Get in touch with us at service@packtpub.com for more details.

At www.PacktPub.com, you can also read a collection of free technical articles, sign up for a range of free newsletters and receive exclusive discounts and offers on Packt books and eBooks.

https://www2.packtpub.com/books/subscription/packtlib

Do you need instant solutions to your IT questions? PacktLib is Packt's online digital book library. Here, you can search, access, and read Packt's entire library of books.

Why subscribe?

Fully searchable across every book published by Packt

Copy and paste, print, and bookmark content

On demand and accessible via a web browser

Free access for Packt account holders

If you have an account with Packt at www.PacktPub.com, you can use this to access PacktLib today and view 9 entirely free books. Simply use your login credentials for immediate access.

Table of Contents

Preface

Chapter 1: NumPy Quick Start

Python

Time for action – installing Python on different operating systems

The Python help system

Time for action – using the Python help system

Basic arithmetic and variable assignment

Time for action – using Python as a calculator

Time for action – assigning values to variables

The print() function

Time for action – printing with the print() function

Code comments

Time for action – commenting code

The if statement

Time for action – deciding with the if statement

The for loop

Time for action – repeating instructions with loops

Python functions

Time for action – defining functions

Python modules

Time for action – importing modules

NumPy on Windows

Time for action – installing NumPy, matplotlib, SciPy, and IPython on Windows

NumPy on Linux

Time for action – installing NumPy, matplotlib, SciPy, and IPython on Linux

NumPy on Mac OS X

Time for action – installing NumPy, SciPy, matplotlib, and IPython with MacPorts or Fink

Building from source

Arrays

Time for action – adding vectors

IPython – an interactive shell

Online resources and help

Summary

Chapter 2: Beginning with NumPy Fundamentals

NumPy array object

Time for action – creating a multidimensional array

Selecting elements

NumPy numerical types

Data type objects

Character codes

The dtype constructors

The dtype attributes

Time for action – creating a record data type

One-dimensional slicing and indexing

Time for action – slicing and indexing multidimensional arrays

Time for action – manipulating array shapes

Time for action – stacking arrays

Time for action – splitting arrays

Time for action – converting arrays

Summary

Chapter 3: Getting Familiar with Commonly Used Functions

File I/O

Time for action – reading and writing files

Comma-separated value files

Time for action – loading from CSV files

Volume Weighted Average Price

Time for action – calculating Volume Weighted Average Price

The mean() function

Time-weighted average price

Value range

Time for action – finding highest and lowest values

Statistics

Time for action – performing simple statistics

Stock returns

Time for action – analyzing stock returns

Dates

Time for action – dealing with dates

Time for action – using the datetime64 data type

Weekly summary

Time for action – summarizing data

Average True Range

Time for action – calculating Average True Range

Simple Moving Average

Time for action – computing the Simple Moving Average

Exponential Moving Average

Time for action – calculating the Exponential Moving Average

Bollinger Bands

Time for action – enveloping with Bollinger Bands

Linear model

Time for action – predicting price with a linear model

Trend lines

Time for action – drawing trend lines

Methods of ndarray

Time for action – clipping and compressing arrays

Factorial

Time for action – calculating the factorial

Missing values and Jackknife resampling

Time for action – handling NaNs with the nanmean(), nanvar(), and nanstd() functions

Summary

Chapter 4: Convenience Functions for Your Convenience

Correlation

Time for action – trading correlated pairs

Polynomials

Time for action – fitting to polynomials

On-balance volume

Time for action – balancing volume

Simulation

Time for action – avoiding loops with vectorize()

Smoothing

Time for action – smoothing with the hanning() function

Initialization

Time for action – creating value initialized arrays with the full() and full_like() functions

Summary

Chapter 5: Working with Matrices and ufuncs

Matrices

Time for action – creating matrices

Creating a matrix from other matrices

Time for action – creating a matrix from other matrices

Universal functions

Time for action – creating universal functions

Universal function methods

Time for action – applying the ufunc methods to the add function

Arithmetic functions

Time for action – dividing arrays

Modulo operation

Time for action – computing the modulo

Fibonacci numbers

Time for action – computing Fibonacci numbers

Lissajous curves

Time for action – drawing Lissajous curves

Square waves

Time for action – drawing a square wave

Sawtooth and triangle waves

Time for action – drawing sawtooth and triangle waves

Bitwise and comparison functions

Time for action – twiddling bits

Fancy indexing

Time for action – fancy indexing in-place for ufuncs with the at() method

Summary

Chapter 6: Moving Further with NumPy Modules 145

Linear algebra 145

Time for acon – inverng matrices 146

Solving linear systems 148

Time for acon – solving a linear system 148

Finding eigenvalues and eigenvectors 149

Time for acon – determining eigenvalues and eigenvectors 150

Singular value decomposion 151

Time for acon – decomposing a matrix 152

Pseudo inverse 154

Time for acon – compung the pseudo inverse of a matrix 154

Determinants 155

Time for acon – calculang the determinant of a matrix 155

Fast Fourier transform 156

Table of Contents

[ v ]

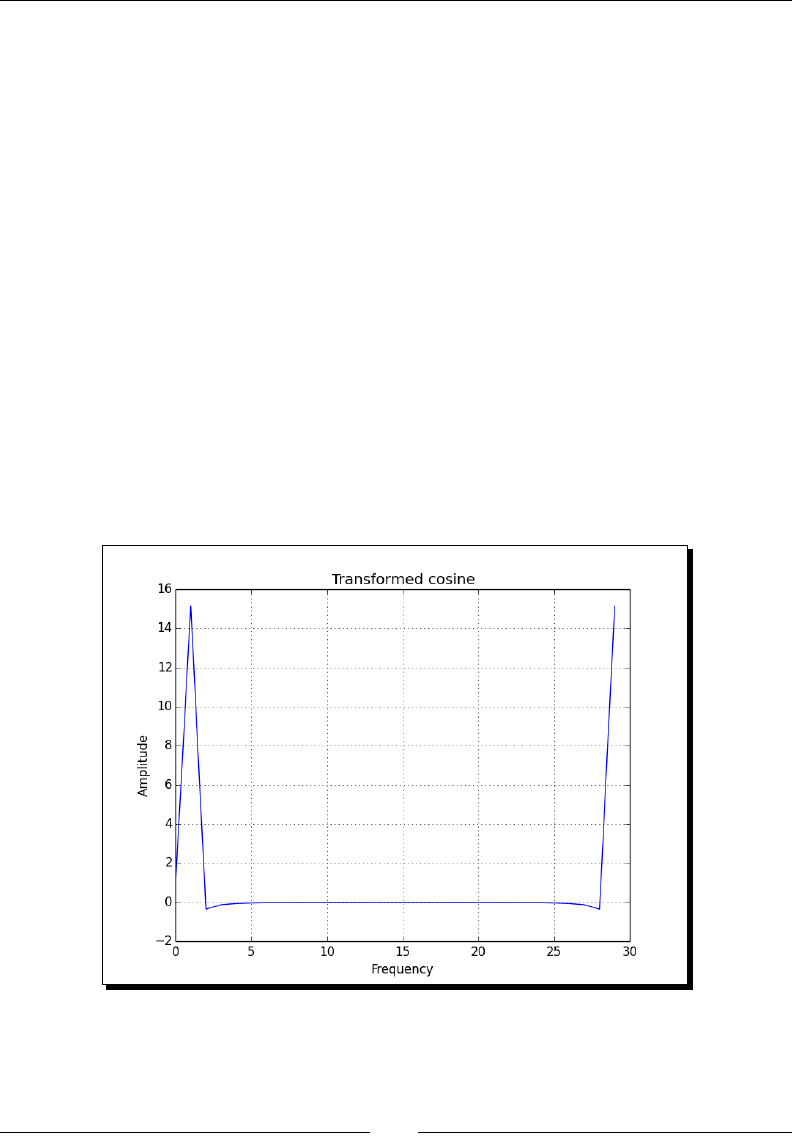

Time for acon – calculang the Fourier transform 156

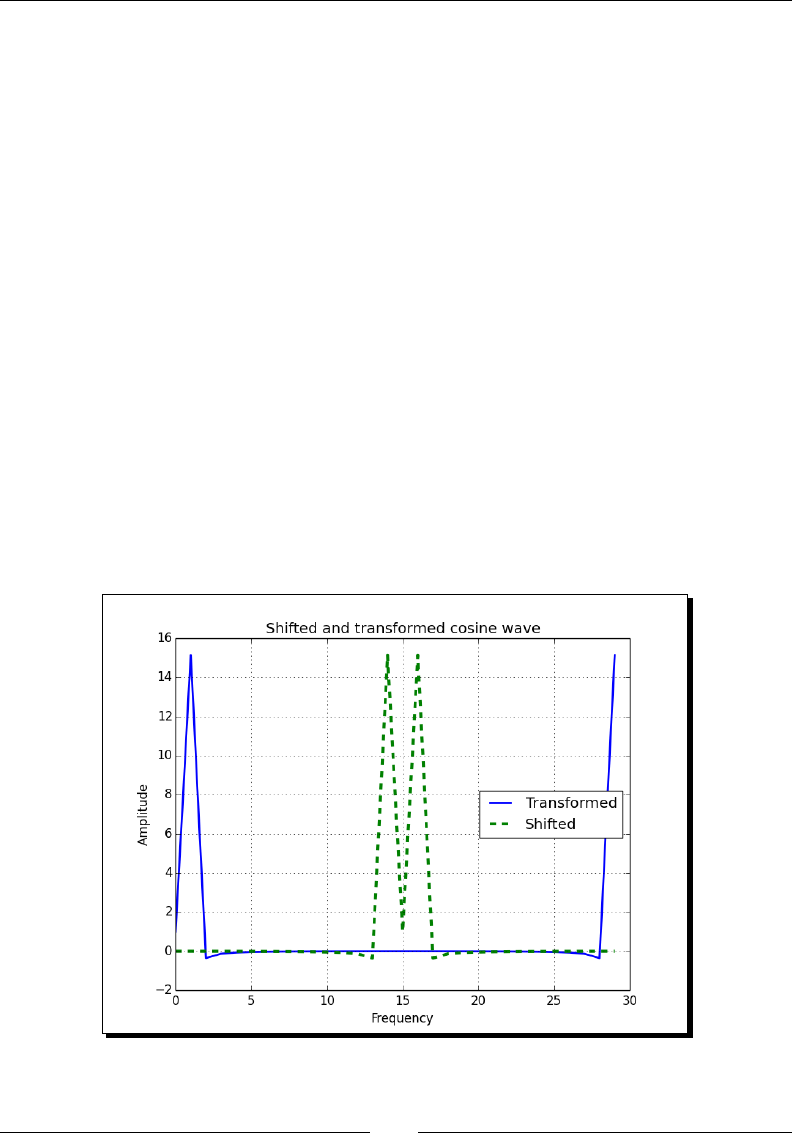

Shiing 158

Time for acon – shiing frequencies 158

Random numbers 160

Time for acon – gambling with the binomial 161

Hypergeometric distribuon 163

Time for acon – simulang a game show 163

Connuous distribuons 165

Time for acon – drawing a normal distribuon 165

Lognormal distribuon 167

Time for acon – drawing the lognormal distribuon 167

Bootstrapping in stascs 169

Time for acon – sampling with numpy.random.choice() 169

Summary 171

Chapter 7: Peeking into Special Rounes 173

Sorng 173

Time for acon – sorng lexically 174

Time for acon – paral sorng via selecon for a fast median

with the paron() funcon 175

Complex numbers 176

Time for acon – sorng complex numbers 177

Searching 178

Time for acon – using searchsorted 178

Array elements extracon 179

Time for acon – extracng elements from an array 179

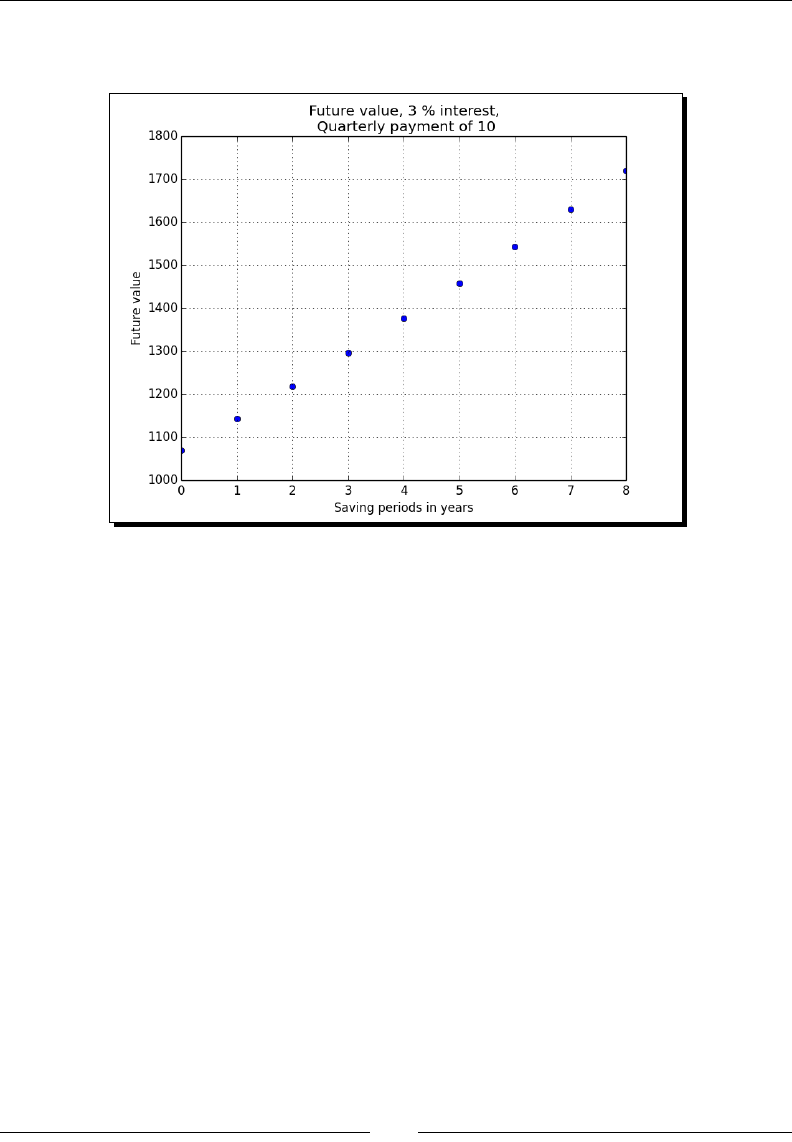

Financial funcons 180

Time for acon – determining the future value 181

Present value 183

Time for acon – geng the present value 183

Net present value 183

Time for acon – calculang the net present value 184

Internal rate of return 184

Time for acon – determining the internal rate of return 185

Periodic payments 185

Time for acon – calculang the periodic payments 185

Number of payments 186

Time for acon – determining the number of periodic payments 186

Interest rate 186

Time for acon – guring out the rate 186

Window funcons 187

Table of Contents

[ vi ]

Time for acon – plong the Bartle window 187

Blackman window 188

Time for acon – smoothing stock prices with the Blackman window 189

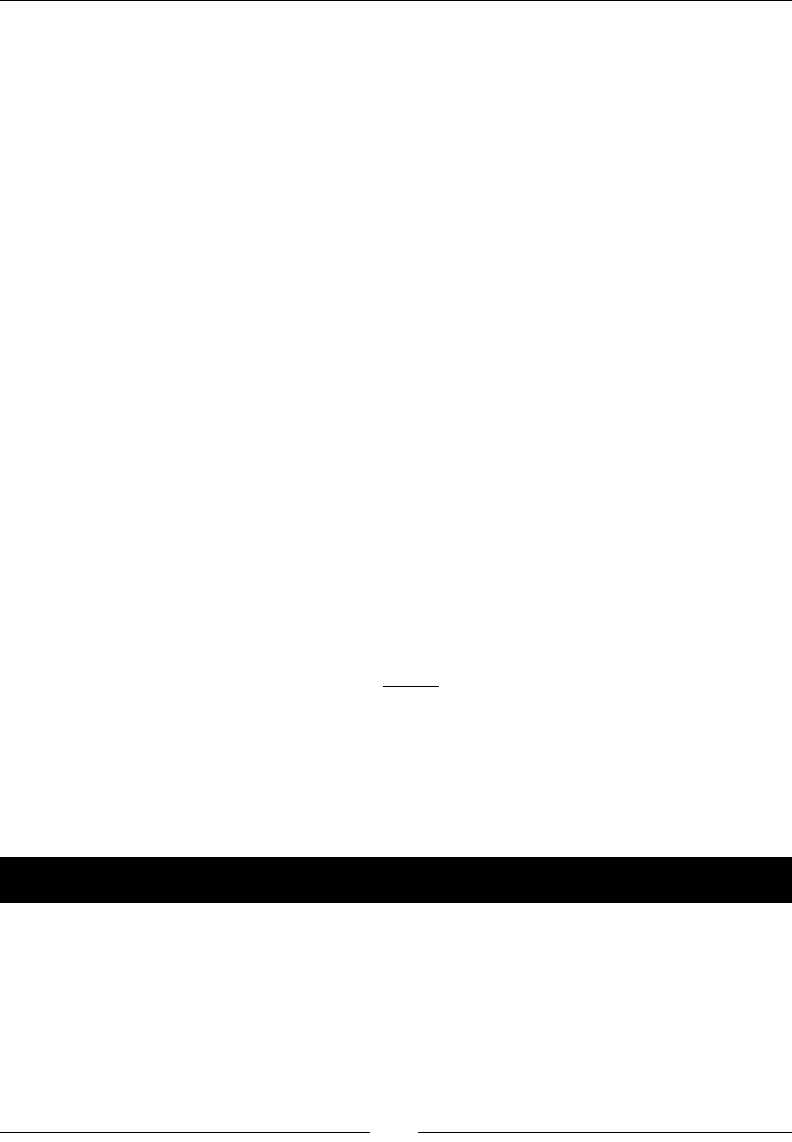

Hamming window 190

Time for acon – plong the Hamming window 190

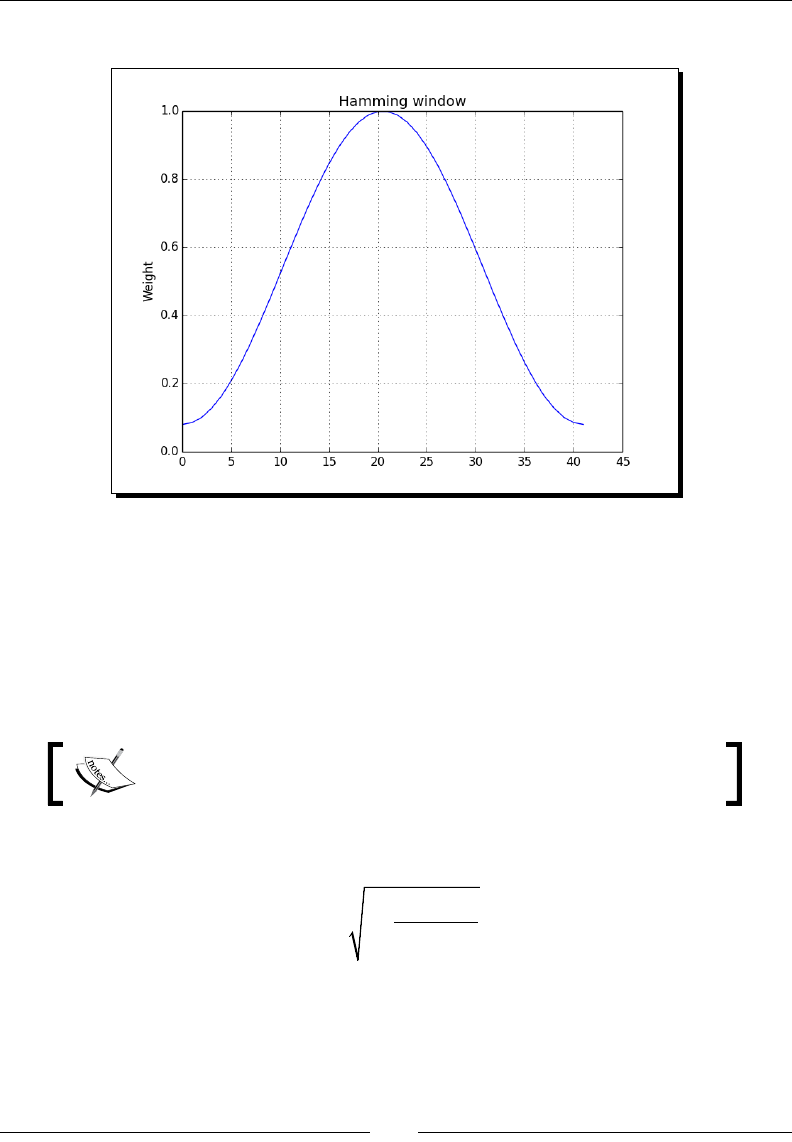

Kaiser window 191

Time for acon – plong the Kaiser window 192

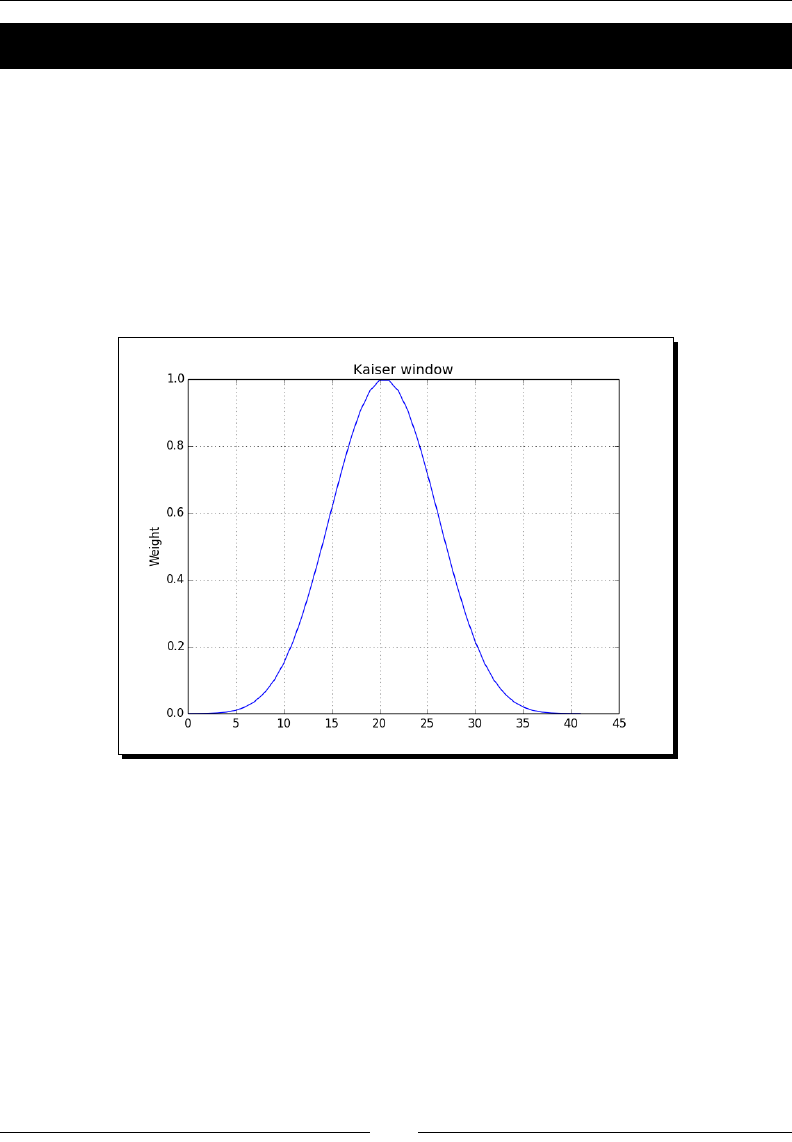

Special mathemacal funcons 192

Time for acon – plong the modied Bessel funcon 193

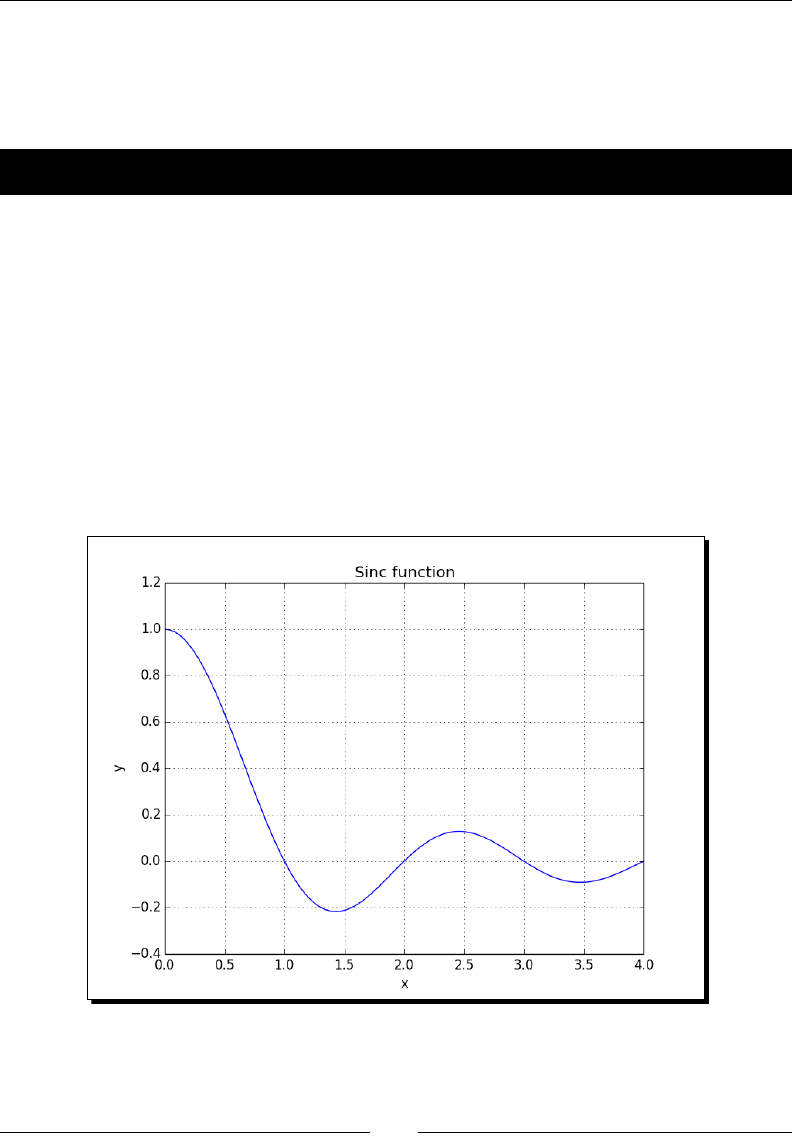

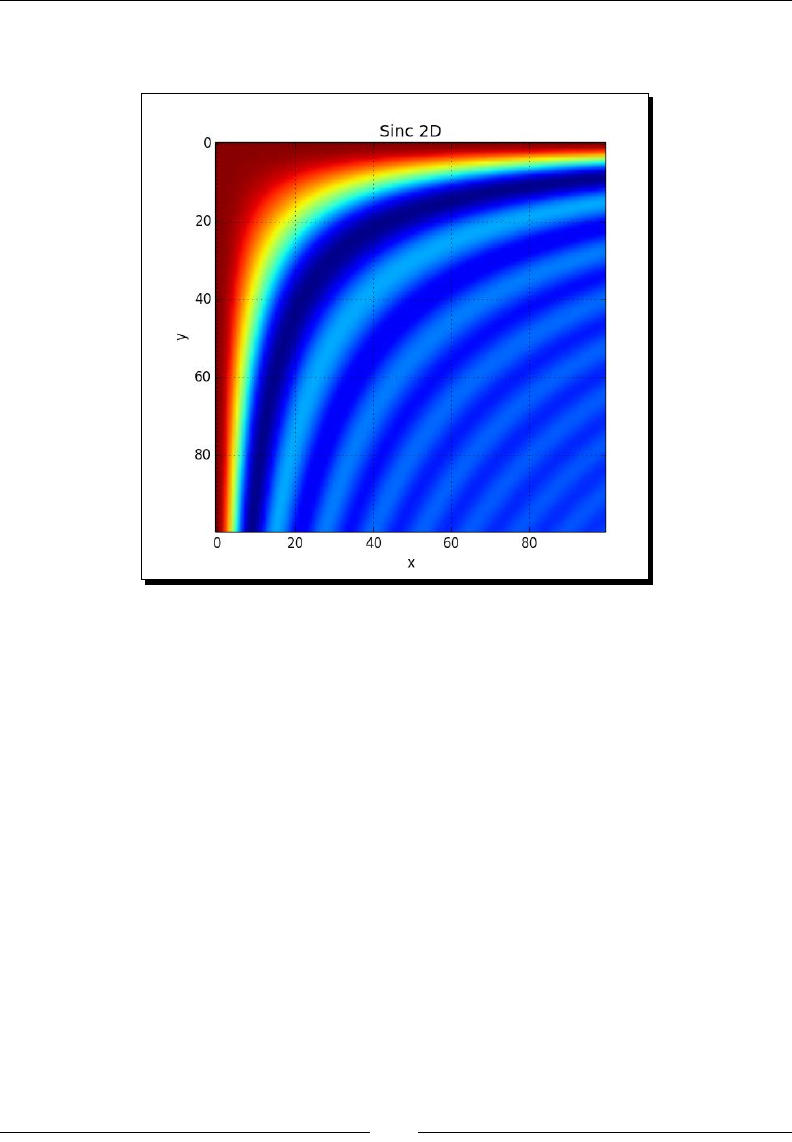

sinc 194

Time for acon – plong the sinc funcon 194

Summary 196

Chapter 8: Assuring Quality with Testing

Assert functions

Time for action – asserting almost equal

Approximately equal arrays

Time for action – asserting approximately equal

Almost equal arrays

Time for action – asserting arrays almost equal

Equal arrays

Time for action – comparing arrays

Ordering arrays

Time for action – checking the array order

Object comparison

Time for action – comparing objects

String comparison

Time for action – comparing strings

Floating-point comparisons

Time for action – comparing with assert_array_almost_equal_nulp

Comparison of floats with more ULPs

Time for action – comparing using maxulp of 2

Unit tests

Time for action – writing a unit test

Nose test decorators

Time for action – decorating tests

Docstrings

Time for action – executing doctests

Summary

Chapter 9: Plong with matplotlib 217

Simple plots 217

Time for acon – plong a polynomial funcon 218

Plot format string 219

Time for acon – plong a polynomial and its derivaves 219

Subplots 221

Time for acon – plong a polynomial and its derivaves 221

Finance 223

Time for acon – plong a year's worth of stock quotes 223

Histograms 226

Time for acon – charng stock price distribuons 226

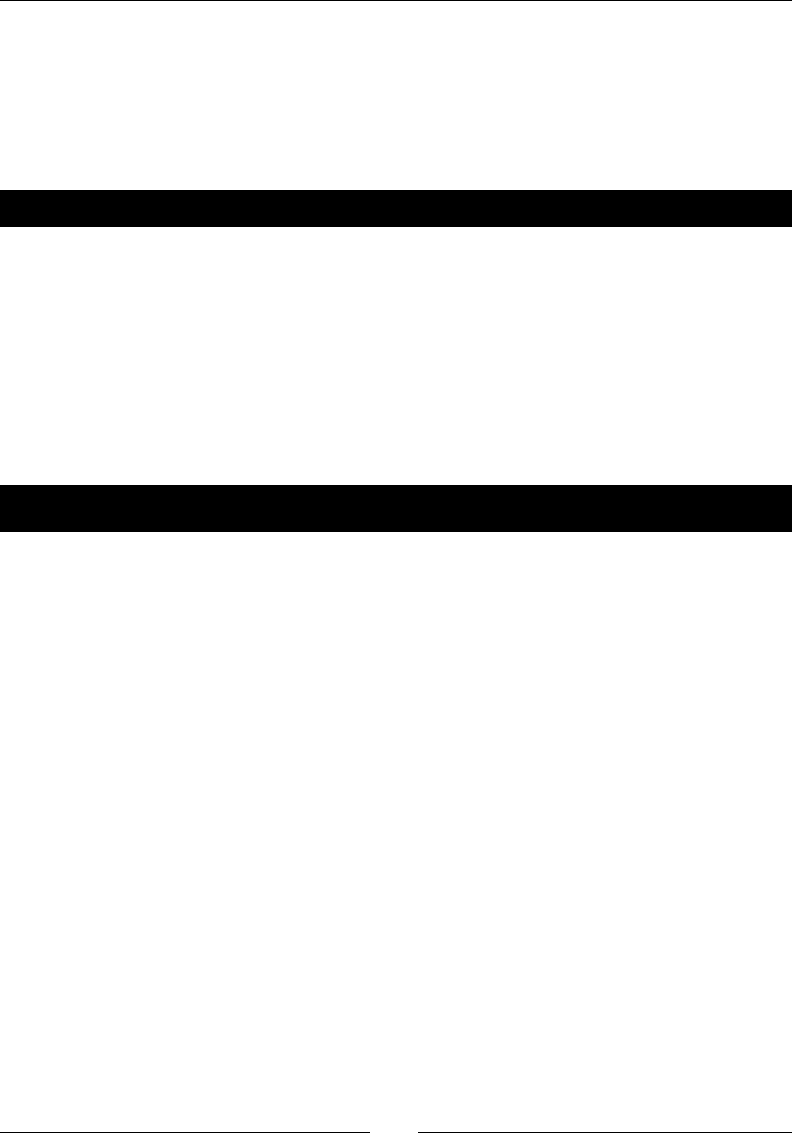

Logarithmic plots 228

Time for acon – plong stock volume 228

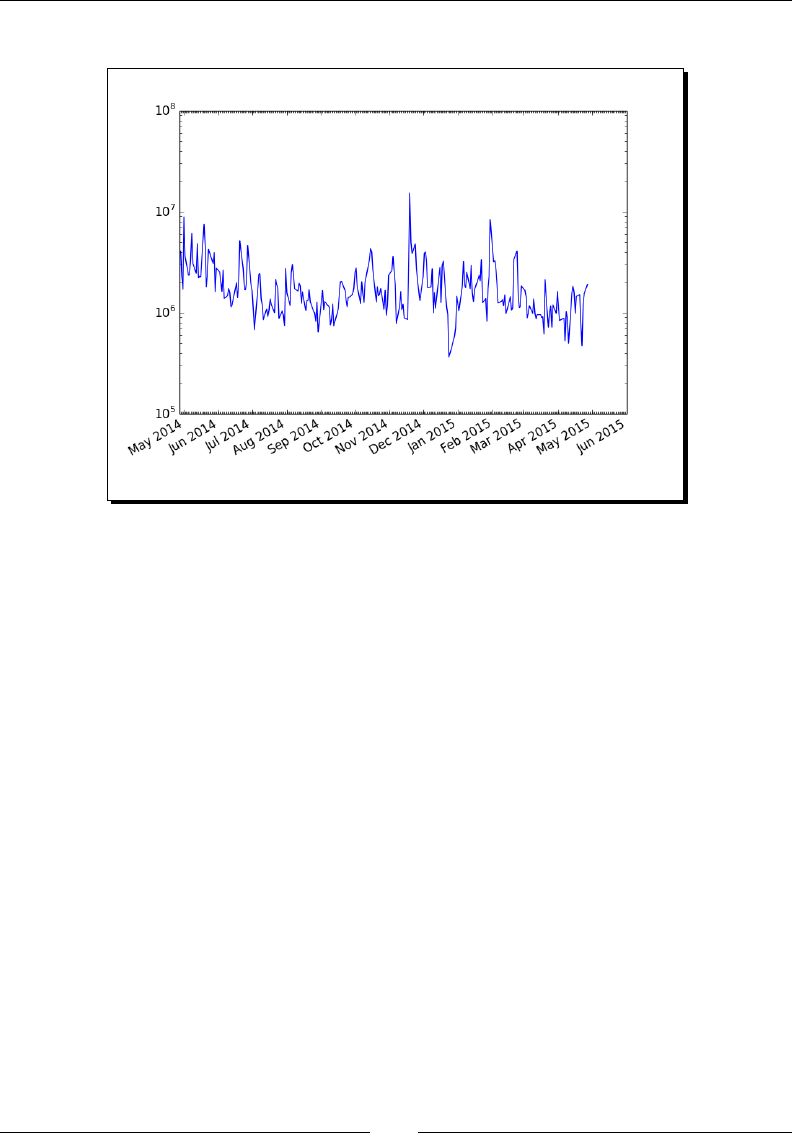

Scaer plots 230

Time for acon – plong price and volume returns with a scaer plot 230

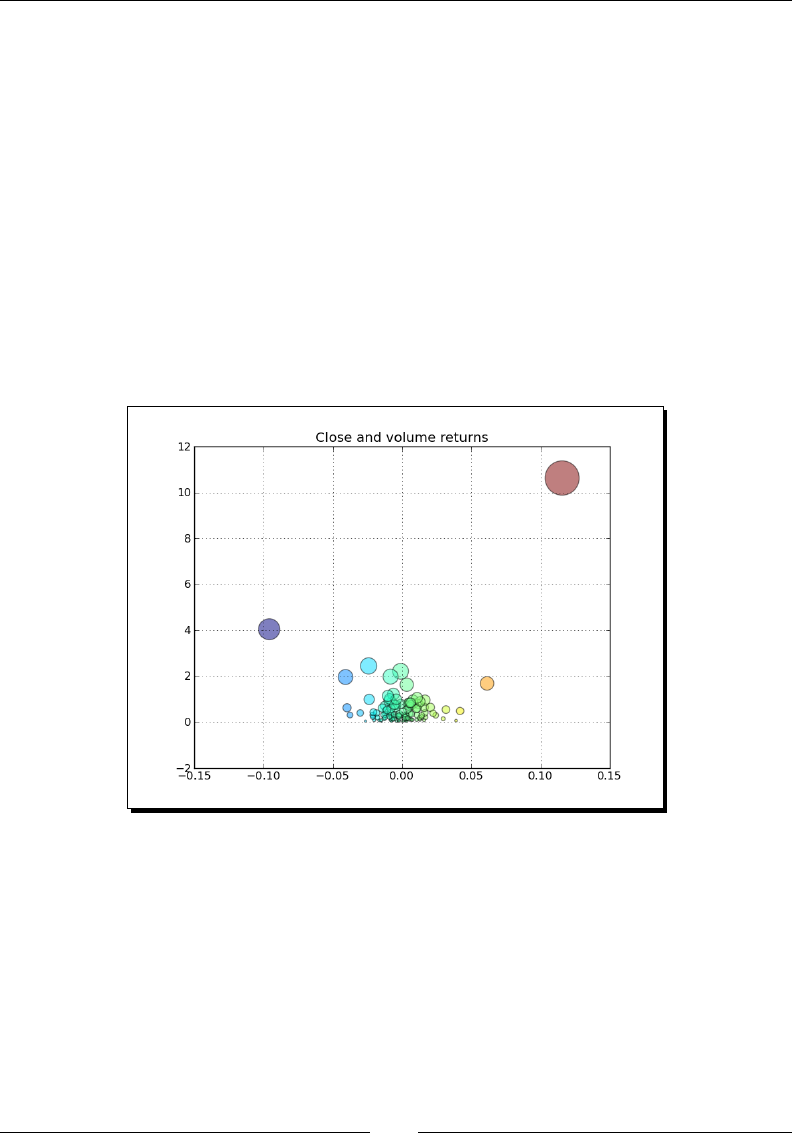

Fill between 232

Time for acon – shading plot regions based on a condion 232

Legend and annotaons 234

Time for acon – using a legend and annotaons 235

Three-dimensional plots 238

Time for acon – plong in three dimensions 238

Contour plots 240

Time for acon – drawing a lled contour plot 240

Animaon 241

Time for acon – animang plots 241

Summary 243

Chapter 10: When NumPy Is Not Enough – SciPy and Beyond 245

MATLAB and Octave 245

Time for acon – saving and loading a .mat le 246

Stascs 247

Time for acon – analyzing random values 247

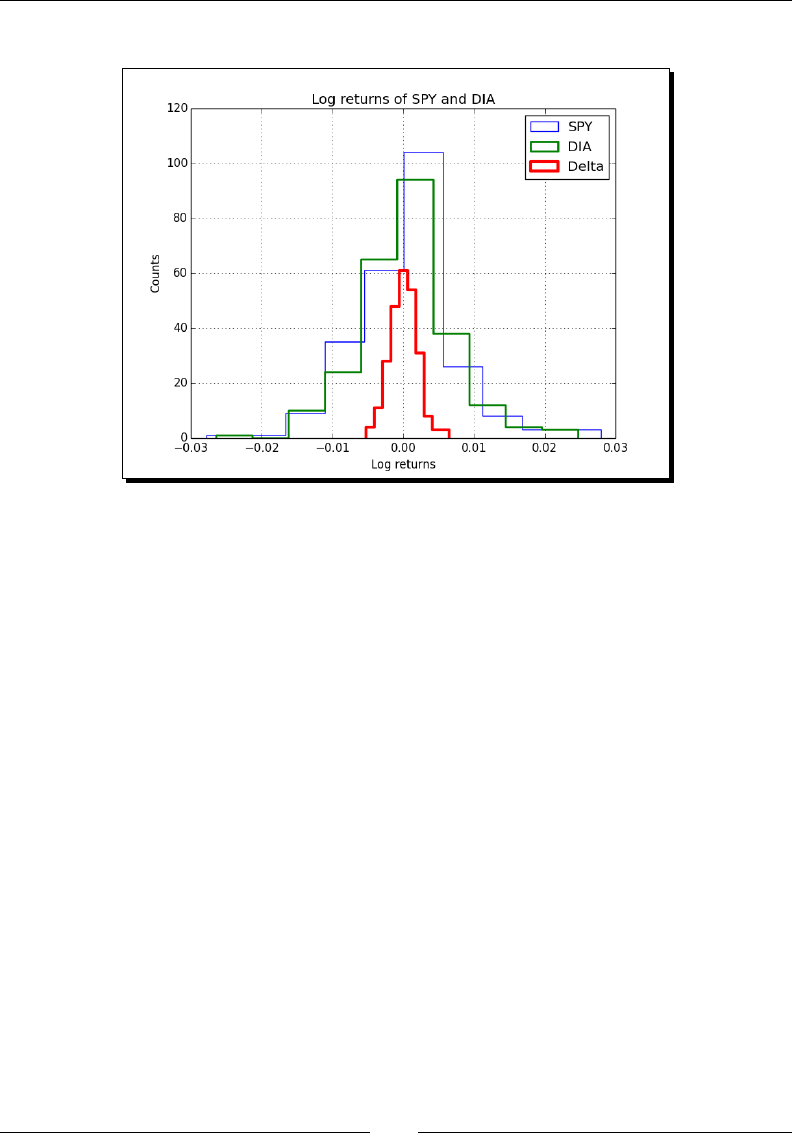

Sample comparison and SciKits 250

Time for acon – comparing stock log returns 250

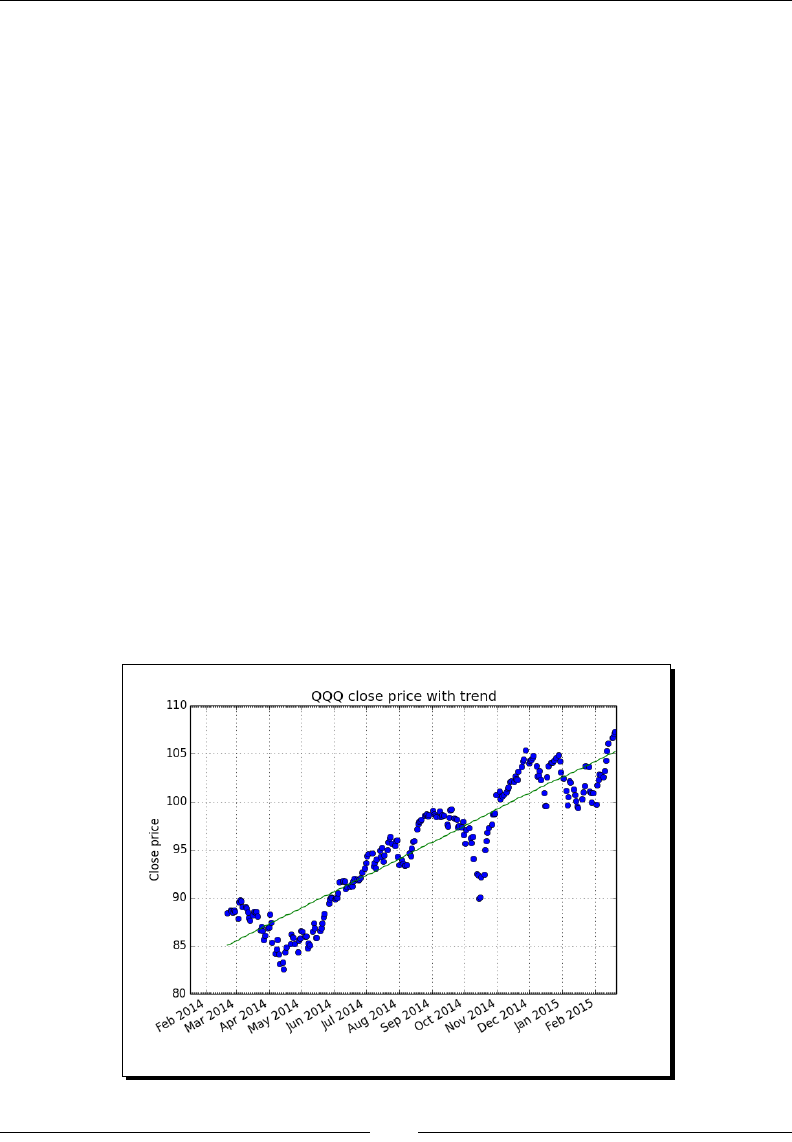

Signal processing 253

Time for acon – detecng a trend in QQQ 253

Fourier analysis 256

Time for acon – ltering a detrended signal 256

Mathemacal opmizaon 259

Time for acon – ng to a sine 259

Numerical integraon 263

Table of Contents

[ viii ]

Time for acon – calculang the Gaussian integral 263

Interpolaon 264

Time for acon – interpolang in one dimension 264

Image processing 266

Time for acon – manipulang Lena 266

Audio processing 268

Time for acon – replaying audio clips 268

Summary 270

Chapter 11: Playing with Pygame 271

Pygame 271

Time for acon – installing Pygame 272

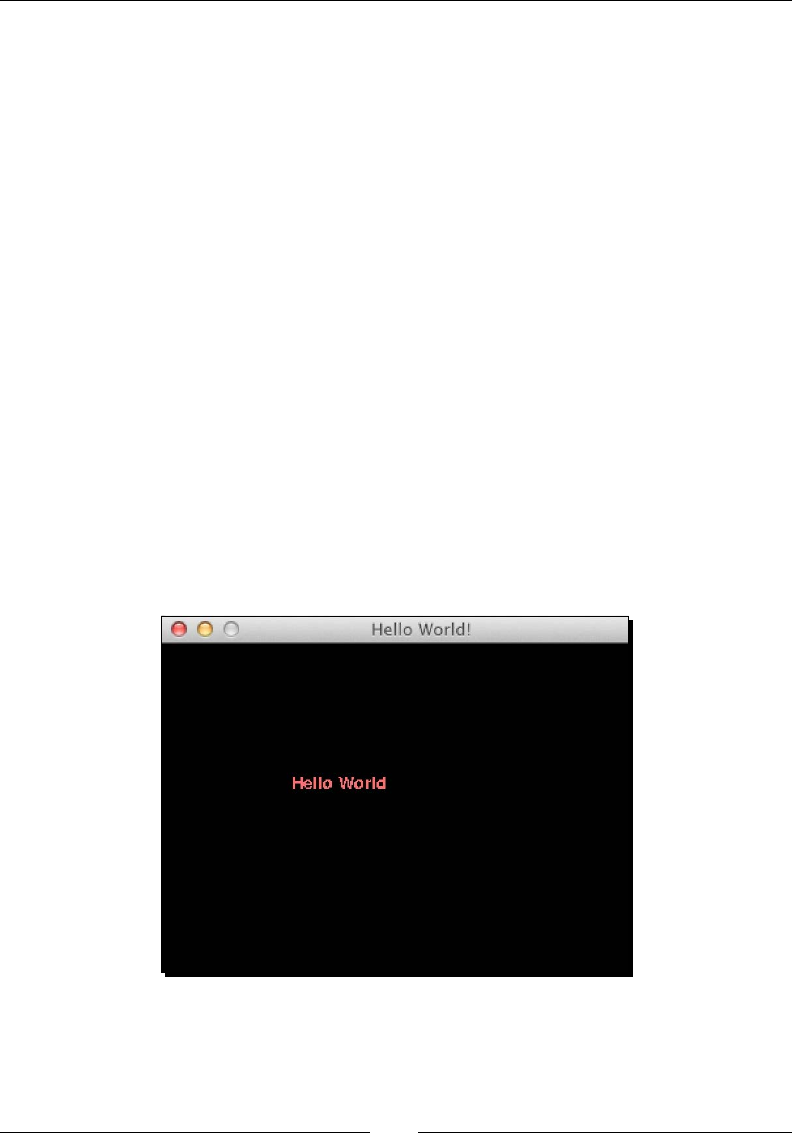

Hello World 272

Time for acon – creang a simple game 272

Animaon 275

Time for acon – animang objects with NumPy and Pygame 275

matplotlib 278

Time for Acon – using matplotlib in Pygame 278

Surface pixels 282

Time for Acon – accessing surface pixel data with NumPy 282

Arcial Intelligence 284

Time for Acon – clustering points 284

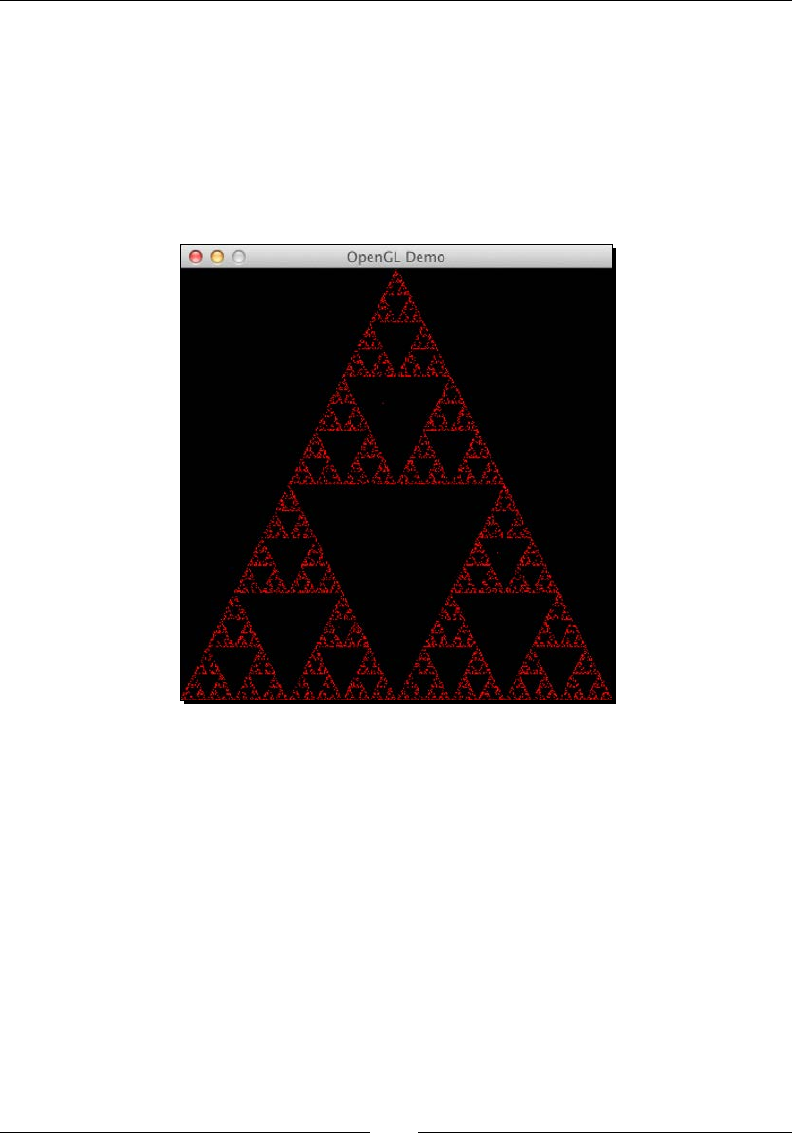

OpenGL and Pygame 287

Time for Acon – drawing the Sierpinski gasket 287

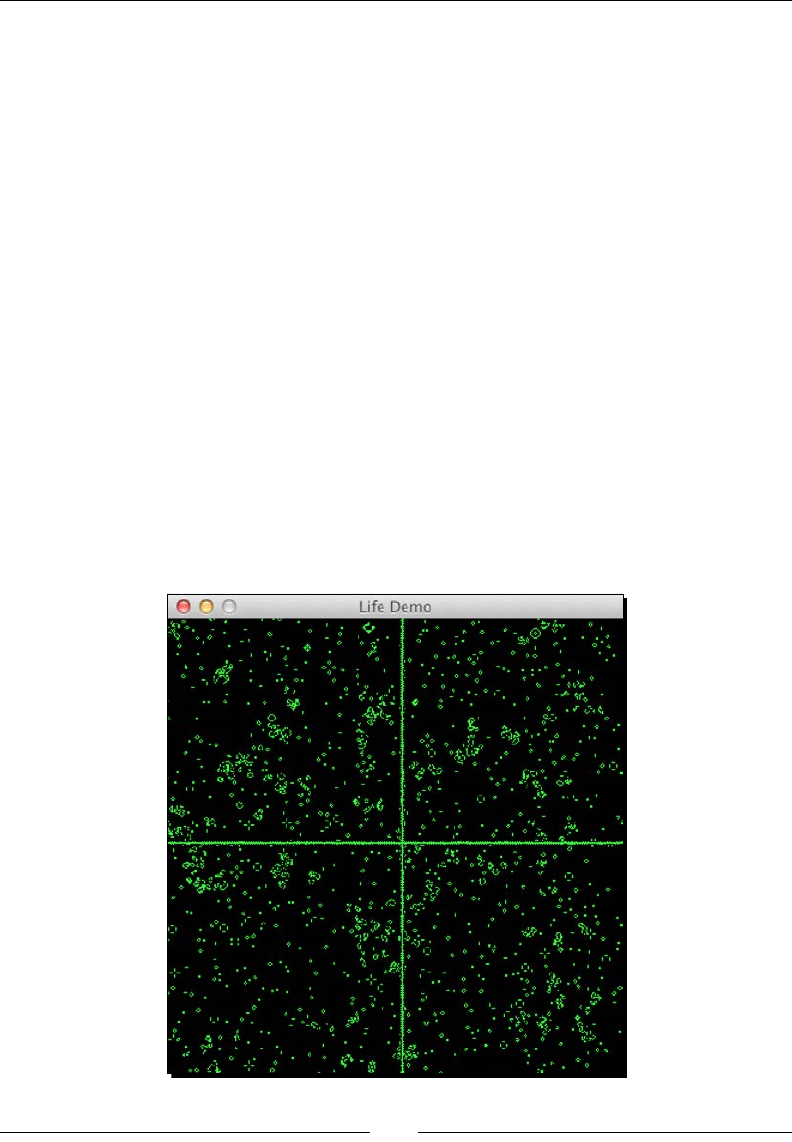

Simulaon game with Pygame 290

Time for Acon – simulang life 290

Summary 294

Appendix A: Pop Quiz Answers 295

Appendix B: Addional Online Resources 299

Python 299

Mathemacs and stascs 300

Appendix C: NumPy Funcons' References 301

Index 307

Preface

Scientists, engineers, and quantitative data analysts face many challenges nowadays. Data scientists want to be able to perform numerical analysis on large datasets with minimal programming effort. They also want to write readable, efficient, and fast code that is as close as possible to the mathematical language they are used to. A number of accepted solutions are available in the scientific computing world.

The C, C++, and Fortran programming languages have their benefits, but they are not interactive and are considered too complex by many. The common commercial alternatives, such as MATLAB, Maple, and Mathematica, provide powerful scripting languages, but these are more limited than any general-purpose programming language. Other open source tools similar to MATLAB exist, such as R, GNU Octave, and Scilab. They too lack the power of a language such as Python.

Python is a popular general-purpose programming language that is widely used in the scientific community. You can access legacy C, Fortran, or R code easily from Python. It is object-oriented and considered to be of a higher level than C or Fortran. It allows you to write readable and clean code with minimal fuss. However, it lacks an out-of-the-box MATLAB equivalent. That's where NumPy comes in. This book is about NumPy and related Python libraries, such as SciPy and matplotlib.

What is NumPy?

NumPy (short for numerical Python) is an open source Python library for scientific computing. It lets you work with arrays and matrices in a natural way. The library contains a long list of useful mathematical functions, including routines for linear algebra, Fourier transforms, and random number generation. LAPACK, a linear algebra library, is used by the NumPy linear algebra module if you have it installed on your system. Otherwise, NumPy provides its own implementation. LAPACK is a well-known library, originally written in Fortran, on which MATLAB relies as well. In a way, NumPy replaces some of the functionality of MATLAB and Mathematica, allowing rapid interactive prototyping.
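As a rough illustration of the three areas mentioned above, the following minimal sketch solves a small linear system, runs a Fourier transform round trip, and draws a few random numbers. The matrix, signal, and seed values are made up for the example and do not come from the book.

```python
import numpy as np

# Linear algebra: solve the 2x2 system A @ x = b.
A = np.array([[3.0, 1.0], [1.0, 2.0]])
b = np.array([9.0, 8.0])
x = np.linalg.solve(A, b)

# Fourier transform: transform a short signal and invert it again.
signal = np.array([0.0, 1.0, 0.0, -1.0])
spectrum = np.fft.fft(signal)
recovered = np.fft.ifft(spectrum).real

# Random numbers: a seeded generator gives reproducible draws
# from a standard normal distribution (the seed is arbitrary).
rng = np.random.RandomState(42)
samples = rng.normal(size=5)

print(x)
print(recovered)
print(samples.shape)
```

Each call returns a NumPy array, so the results can be fed directly into further array operations.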

We will not discuss NumPy from a contributing developer's perspective, but rather from a user's perspective. NumPy is a very active project and has a lot of contributors. Maybe one day you will be one of them!

History

NumPy is based on its predecessor Numeric. Numeric was first released in 1995 and is now deprecated. Neither Numeric nor NumPy made it into the standard Python library for various reasons. However, you can install NumPy separately, which will be explained in Chapter 1, NumPy Quick Start.

In 2001, a number of people inspired by Numeric created SciPy, an open source scientific computing Python library that provides functionality similar to that of MATLAB, Maple, and Mathematica. Around this time, people were growing increasingly unhappy with Numeric. Numarray was created as an alternative to Numeric. That is also deprecated now. It was better in some areas than Numeric, but worked very differently. For that reason, SciPy kept on depending on the Numeric philosophy and the Numeric array object. As is customary with the latest and greatest software, the arrival of Numarray led to the development of an entire ecosystem around it, with a range of useful tools.

In 2005, Travis Oliphant, an early contributor to SciPy, decided to do something about this situation. He tried to integrate some of Numarray's features into Numeric. A complete rewrite took place, and it culminated in the release of NumPy 1.0 in 2006. At that time, NumPy had all the features of Numeric and Numarray, and more. Tools were available to facilitate the upgrade from Numeric and Numarray. The upgrade is recommended since Numeric and Numarray are not actively supported any more.

Originally, the NumPy code was a part of SciPy. It was later separated and is now used by SciPy for array and matrix processing.

Why use NumPy?

NumPy code is much cleaner than plain Python code that accomplishes the same tasks. Fewer loops are required because operations work directly on arrays and matrices. The many convenience and mathematical functions make life easier as well. The underlying algorithms have stood the test of time and have been designed with high performance in mind.
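To make the point about fewer loops concrete, here is a small sketch that computes the same result twice, once with an explicit Python loop and once with whole-array operations. The function names are hypothetical and chosen only for this illustration.

```python
import numpy as np

def squares_plus_cubes_python(n):
    """Plain Python: visit every index explicitly."""
    a = list(range(n))
    b = list(range(n))
    return [a[i] ** 2 + b[i] ** 3 for i in range(n)]

def squares_plus_cubes_numpy(n):
    """NumPy: the arithmetic applies to whole arrays at once."""
    a = np.arange(n)
    b = np.arange(n)
    return a ** 2 + b ** 3

py_result = squares_plus_cubes_python(5)
np_result = squares_plus_cubes_numpy(5)

print(py_result)
print(np_result)
```

Both versions produce the same values; the NumPy version simply expresses the computation without index bookkeeping.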

NumPy's arrays are stored more efficiently than an equivalent data structure in base Python, such as a list of lists. Array IO is significantly faster too. The improvement in performance scales with the number of elements of the array. For large arrays, it really pays off to use NumPy. Files as large as several terabytes can be memory-mapped to arrays, leading to efficient reading and writing of data.
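Memory mapping can be sketched with `numpy.memmap`, which backs an array with a file on disk so that only the parts you touch are read. The file name `demo.dat` and the tiny array size below are assumptions made for the example; a real use case would involve a far larger file.

```python
import os
import tempfile

import numpy as np

# Hypothetical scratch file in a temporary directory.
path = os.path.join(tempfile.mkdtemp(), "demo.dat")

# Create a file-backed array and fill it; mode "w+" creates the file.
writer = np.memmap(path, dtype=np.float64, mode="w+", shape=(1000,))
writer[:] = np.arange(1000)
writer.flush()  # push pending changes to disk
del writer      # release the mapping

# Reopen read-only and touch only a small slice of the data.
reader = np.memmap(path, dtype=np.float64, mode="r", shape=(1000,))
chunk_sum = float(reader[10:20].sum())

print(chunk_sum)
```

The memory-mapped object behaves like an ordinary array, so slicing and reductions work as usual.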

The drawback of NumPy arrays is that they are more specialized than plain lists. Outside the context of numerical computations, NumPy arrays are less useful. The technical details of NumPy arrays will be discussed in later chapters.

Large portions of NumPy are written in C. This makes NumPy faster than pure Python code. A NumPy C API exists as well, and it allows further extension of functionality with the help of the C language. The C API falls outside the scope of the book. Finally, since NumPy is open source, you get all the related advantages. The price is the lowest possible: it is free, as in free beer. You don't have to worry about licenses every time somebody joins your team or you need an upgrade of the software. The source code is available for everyone. This of course is beneficial to code quality.

Limitations of NumPy

If you are a Java programmer, you might be interested in Jython, the Java implementation of
Python. In that case, I have bad news for you. Unfortunately, Jython runs on the Java Virtual
Machine and cannot access NumPy because NumPy's modules are mostly written in C. You
could say that Jython and Python are two totally different worlds, though they do implement
the same specifications. There are some workarounds for this discussed in NumPy Cookbook
- Second Edition, Packt Publishing, written by Ivan Idris.

What this book covers

Chapter 1, NumPy Quick Start, guides you through the steps needed to install NumPy on
your system and create a basic NumPy application.

Chapter 2, Beginning with NumPy Fundamentals, introduces NumPy arrays and
fundamentals.

Chapter 3, Getting Familiar with Commonly Used Functions, teaches you the most commonly
used NumPy functions, namely the basic mathematical and statistical functions.

Chapter 4, Convenience Functions for Your Convenience, tells you about functions that
make working with NumPy easier. This includes functions that select certain parts of your
arrays, for instance, based on a Boolean condition. You also learn about polynomials and
manipulating the shapes of NumPy objects.

Chapter 5, Working with Matrices and ufuncs, covers matrices and universal functions.
Matrices are well-known in mathematics and have their representation in NumPy as well.
Universal functions (ufuncs) work on arrays element by element, or on scalars. ufuncs expect
a set of scalars as the input and produce a set of scalars as the output.


Chapter 6, Moving Further with NumPy Modules, discusses a number of basic modules
of universal functions. These functions can typically be mapped to their mathematical
counterparts, such as addition, subtraction, division, and multiplication.

Chapter 7, Peeking into Special Routines, describes some of the more specialized NumPy
functions. As NumPy users, we sometimes find ourselves having special requirements.
Fortunately, NumPy satisfies most of our needs.

Chapter 8, Assuring Quality with Testing, teaches you how to write NumPy unit tests.

Chapter 9, Plotting with matplotlib, covers matplotlib in depth, a very useful Python plotting
library. NumPy cannot be used on its own to create graphs and plots. matplotlib integrates
nicely with NumPy and has plotting capabilities comparable to MATLAB.

Chapter 10, When NumPy Is Not Enough – SciPy and Beyond, covers more details about
SciPy. We know that SciPy and NumPy are historically related. SciPy, as mentioned in the
History section, is a high-level Python scientific computing framework built on top of NumPy.
It can be used in conjunction with NumPy.

Chapter 11, Playing with Pygame, is the dessert of this book. You learn how to create fun
games with NumPy and Pygame. You also get a taste of artificial intelligence in this chapter.

Appendix A, Pop Quiz Answers, has the answers to all the pop quiz questions within
the chapters.

Appendix B, Additional Online Resources, contains links to Python, mathematics, and
statistics websites.

Appendix C, NumPy Functions' References, lists some useful NumPy functions and
their descriptions.

What you need for this book

To try out the code samples in this book, you will need a recent build of NumPy. This means
that you will need one of the Python versions supported by NumPy as well. Some code
samples make use of matplotlib for illustration purposes. matplotlib is not strictly required
to follow the examples, but it is recommended that you install it too. Chapter 10 is
about SciPy and has one example involving SciKits.

Here is a list of the software used to develop and test the code examples:

Python 2.7

NumPy 1.9

SciPy 0.13


matplotlib 1.3.1

Pygame 1.9.1

IPython 2.4.1

Needless to say, you don't need exactly this software and these versions on your computer.
Python and NumPy constitute the absolute minimum you will need.

Who this book is for

This book is for scientists, engineers, programmers, or analysts looking for a high-quality,
open source mathematical library. Knowledge of Python is assumed. Also, some affinity, or
at least interest, in mathematics and statistics is required. However, I have provided brief
explanations and pointers to learning resources.

Sections

In this book, you will find several headings that appear frequently (Time for action, What just
happened?, Have a go hero, and Pop quiz).

To give clear instructions on how to complete a procedure or task, we use the following
sections.

Time for action – heading

1. Action 1

2. Action 2

3. Action 3

Instructions often need some extra explanation to ensure that they make sense, so they are
followed by these sections.

What just happened?

This section explains the working of the tasks or instructions that you have just completed.

You will also find some other learning aids in the book.


Pop quiz – heading

These are short multiple-choice questions intended to help you test your own understanding.

Have a go hero – heading

These are practical challenges that give you ideas to experiment with what you have learned.

Conventions

In this book, you will find a number of styles of text that distinguish between different
kinds of information. Here are some examples of these styles, and an explanation of
their meaning.

Code words in text are shown as follows: "Notice that numpysum() does not need a
for loop."

A block of code is set as follows:

def numpysum(n):
    a = numpy.arange(n) ** 2
    b = numpy.arange(n) ** 3
    c = a + b
    return c

When we wish to draw your attention to a particular part of a code block, the relevant lines
or items are set in bold:

reals = np.isreal(xpoints)

print "Real number?", reals

Real number? [ True True True True False False False False]

Any command-line input or output is written as follows:

>>> from numpy.testing import rundocs

>>> rundocs('docstringtest.py')

New terms and important words are shown in bold. Words that you see on the screen, in
menus or dialog boxes for example, appear in the text like this: "Clicking on the Next button
moves you to the next screen."


Warnings or important notes appear in a box like this.

Tips and tricks appear like this.

Reader feedback

Feedback from our readers is always welcome. Let us know what you think about this
book: what you liked or disliked. Reader feedback is important for us as it helps us develop
titles that you will really get the most out of.

To send us general feedback, simply e-mail feedback@packtpub.com, and mention the
book's title in the subject of your message.

If there is a topic that you have expertise in and you are interested in either writing or
contributing to a book, see our author guide at www.packtpub.com/authors.

Customer support

Now that you are the proud owner of a Packt book, we have a number of things to help you

to get the most from your purchase.

Downloading the example code

You can download the example code files from your account at http://www.packtpub.com
for all the Packt Publishing books you have purchased. If you purchased this book
elsewhere, you can visit http://www.packtpub.com/support and register to have the
files e-mailed directly to you.

Downloading the color images of this book

We also provide you with a PDF file that has color images of the screenshots/diagrams used
in this book. The color images will help you better understand the changes in the output.
You can download this file from
https://www.packtpub.com/sites/default/files/downloads/NumpyBeginner'sGuide_Third_Edition_ColorImages.pdf.


Errata

Although we have taken every care to ensure the accuracy of our content, mistakes do happen.
If you find a mistake in one of our books, maybe a mistake in the text or the code, we
would be grateful if you could report this to us. By doing so, you can save other readers from
frustration and help us improve subsequent versions of this book. If you find any errata, please
report them by visiting http://www.packtpub.com/submit-errata, selecting your book,
clicking on the Errata Submission Form link, and entering the details of your errata. Once your
errata are verified, your submission will be accepted and the errata will be uploaded to our
website or added to any list of existing errata under the Errata section of that title.

To view the previously submitted errata, go to https://www.packtpub.com/books/content/support
and enter the name of the book in the search field. The required
information will appear under the Errata section.

Piracy

Piracy of copyrighted material on the Internet is an ongoing problem across all media.
At Packt, we take the protection of our copyright and licenses very seriously. If you come
across any illegal copies of our works in any form on the Internet, please provide us with
the location address or website name immediately so that we can pursue a remedy.

Please contact us at copyright@packtpub.com with a link to the suspected pirated material.

We appreciate your help in protecting our authors and our ability to bring you
valuable content.

Questions

If you have a problem with any aspect of this book, you can contact us at

questions@packtpub.com, and we will do our best to address the problem.


NumPy Quick Start

Let's get started. We will install NumPy and related software on different

operating systems and have a look at some simple code that uses NumPy. This

chapter briefly introduces the IPython interactive shell. SciPy is closely related

to NumPy, so you will see the SciPy name appearing here and there. At the end

of this chapter, you will find pointers on how to find additional information

online if you get stuck or are uncertain about the best way to solve problems.

In this chapter, you will cover the following topics:

Install Python, SciPy, matplotlib, IPython, and NumPy on Windows, Linux, and Macintosh

Do a short refresher of Python

Write simple NumPy code

Get to know IPython

Browse online documentation and resources

Python

NumPy is based on Python, so you need to have Python installed. On some operating
systems, Python is already installed. However, you need to check whether the Python version
corresponds with the NumPy version you want to install. There are many implementations of
Python, including commercial implementations and distributions. In this book, we focus on
the standard CPython implementation, which is guaranteed to be compatible with NumPy.
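One way to check which Python version and implementation you are running is sketched below. It uses only the standard library; the variable names are chosen for this example.

```python
import sys
import platform

# sys.version_info holds the version of the running interpreter.
major, minor = sys.version_info[0], sys.version_info[1]
print("Python %d.%d" % (major, minor))

# platform.python_implementation() names the implementation, for example 'CPython'.
implementation = platform.python_implementation()
print(implementation)
```

Compare the printed version with the Python versions listed for the NumPy release you intend to install.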


Time for action – installing Python on different operating systems

NumPy has binary installers for Windows, various Linux distributions, and Mac OS X
at http://sourceforge.net/projects/numpy/files/. There is also a source
distribution, if you prefer that. You need to have Python 2.4.x or above installed on your
system. We will go through the various steps required to install Python on the following
operating systems:

Debian and Ubuntu: Python might already be installed on Debian and Ubuntu,

but the development headers are usually not. On Debian and Ubuntu, install the

python and python-dev packages with the following commands:

$ [sudo] apt-get install python

$ [sudo] apt-get install python-dev

Windows: The Windows Python installer is available at https://www.python.org/downloads/.
On this website, we can also find installers for Mac OS X and
source archives for Linux, UNIX, and Mac OS X.

Mac: Python comes preinstalled on Mac OS X. We can also get Python through

MacPorts, Fink, Homebrew, or similar projects.

Install, for instance, the Python 2.7 port by running the following command:

$ [sudo] port install python27

Linear Algebra PACKage (LAPACK) does not need to be present but, if it is,
NumPy will detect it and use it during the installation phase. It is recommended
that you install LAPACK for serious numerical analysis as it has useful numerical
linear algebra functionality.

What just happened?

We installed Python on Debian, Ubuntu, Windows, and the Mac OS X.

You can download the example code files for all the Packt books you have
purchased from your account at https://www.packtpub.com/. If you
purchased this book elsewhere, you can visit
https://www.packtpub.com/books/content/support and register to have the files e-mailed
directly to you.


The Python help system

Before we start the NumPy introduction, let's take a brief tour of the Python help system,
in case you have forgotten how it works or are not very familiar with it. The Python help
system allows you to look up documentation from the interactive Python shell. A shell is
an interactive program, which accepts commands and executes them for you.

Time for action – using the Python help system

Depending on your operating system, you can access the Python shell with special
applications, usually a terminal of some sort.

1. In such a terminal, type the following command to start a Python shell:

$ python

2. You will get a short message with the Python version and other information and the
following prompt:

>>>

Type the following in the prompt:

>>> help()

Another message appears and the prompt changes as follows:

help>

3. If you type, for instance, keywords as the message says, you get a list of keywords.
The topics command gives a list of topics. If you type any of the topic names (such
as LISTS) in the prompt, you get additional information about the topic. Typing q
quits the information screen. Pressing Ctrl + D together returns you to the normal
Python prompt:

>>>

Pressing Ctrl + D together again ends the Python shell session.
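The same documentation can also be retrieved from a script rather than the interactive prompt. The following is a minimal sketch using the standard library's pydoc module, which is what help() relies on; the choice of len as the object to look up is arbitrary.

```python
import pydoc

# pydoc.render_doc() returns the text that help() would display for an object,
# which makes it usable outside an interactive session.
text = pydoc.render_doc(len)

# Show only the first line of the documentation.
print(text.splitlines()[0])
```

Inside the shell, calling help(len) directly is the quicker route to the same information.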

What just happened?

We learned about the Python interactive shell and the Python help system.


Basic arithmetic and variable assignment

In the Time for action – using the Python help system section, we used the Python shell to
look up documentation. We can also use Python as a calculator. By the way, this is just a
refresher, so if you are completely new to Python, I recommend taking some time to learn
the basics. If you put your mind to it, learning basic Python should not take you more than a
couple of weeks.

Time for action – using Python as a calculator

We can use Python as a calculator as follows:

1. In a Python shell, add 2 and 2 as follows:

>>> 2 + 2

4

2. Multiply 2 and 2 as follows:

>>> 2 * 2

4

3. Divide 2 and 2 as follows:

>>> 2/2

1

4. If you have programmed before, you probably know that dividing is a bit tricky since
there are different types of dividing. For a calculator, the result is usually adequate,
but the following division may not be what you were expecting:

>>> 3/2

1

We will discuss what this result is about in several later chapters of this book. Take

the cube of 2 as follows:

>>> 2 ** 3

8
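The result of 3/2 in step 4 comes from Python 2 performing integer division when both operands are integers. The following sketch shows ways of being explicit about the kind of division you want; these expressions behave the same on Python 2 and Python 3.

```python
# Floor division discards the fractional part.
floor_result = 3 // 2
print(floor_result)

# Making one operand a float forces true division.
true_result = 3 / 2.0
print(true_result)

# float() converts an integer explicitly before dividing.
converted_result = float(3) / 2
print(converted_result)
```

Writing // or a float operand makes the intent visible regardless of the Python version in use.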

What just happened?

We used the Python shell as a calculator and performed addition, multiplication, division,
and exponentiation.


Time for action – assigning values to variables

Assigning values to variables in Python works in a similar way to most programming

languages.

1. For instance, assign the value of 2 to a variable named var as follows:

>>> var = 2

>>> var

2

2. We defined the variable and assigned it a value. In this Python code, the type of the
variable is not fixed. We can make the variable into a list, which is a built-in Python
type corresponding to an ordered sequence of values. Assign a list to var as follows:

>>> var = [2, 'spam', 'eggs']

>>> var

[2, 'spam', 'eggs']

We can assign a new value to a list item using its index number (counting starts from
0). Assign a new value to the first list element:

>>> var[0] = 'ham'

>>> var

['ham', 'spam', 'eggs']

3. We can also swap values easily. Define two variables and swap their values:

>>> a = 1

>>> b = 2

>>> a, b = b, a

>>> a

2

>>> b

1

What just happened?

We assigned values to variables and Python list items. This section is by no means
exhaustive; therefore, if you are struggling, please read Appendix B, Additional Online
Resources, to find recommended Python tutorials.


The print() function

If you haven't programmed in Python for a while or are a Python novice, you may be
confused about the Python 2 versus Python 3 discussions. In a nutshell, the latest version
Python 3 is not backward compatible with the older Python 2 because the Python
development team felt that some issues were fundamental and therefore warranted a
radical change. The Python team has committed to maintain Python 2 until 2020. This may
be problematic for the people who still depend on Python 2 in some way. The consequence
for the print() function is that we have two types of syntax.

Time for action – printing with the print() function

We can print using the print() function as follows:

1. The old syntax is as follows:

>>> print 'Hello'

Hello

2. The new Python 3 syntax is as follows:

>>> print('Hello')

Hello

The parentheses are now mandatory in Python 3. In this book, I try to use the

new syntax as much as possible; however, I use Python 2 to be on the safe side. To

enforce the syntax, each Python 2 script with print() calls in this book starts with:

>>> from __future__ import print_function

3. Try to use the old syntax to get the following error message:

>>> print 'Hello'

File "<stdin>", line 1

print 'Hello'

^

SyntaxError: invalid syntax

4. To print a newline, use the following syntax:

>>> print()

5. To print multiple items, separate them with commas:

>>> print(2, 'ham', 'egg')

2 ham egg


6. By default, Python separates the printed values with spaces and prints output to the
screen. You can customize these settings. Read more about this function by typing
the following command:

>>> help(print)

You can exit again by typing q.
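The customization mentioned in step 6 is done through keyword arguments of print(). The following sketch assumes Python 3 (or Python 2 with the print_function import from step 2 already in effect); the buffer and captured names are made up for this example.

```python
import io

# sep replaces the default single space between the printed values.
print(2, 'ham', 'egg', sep=', ')

# end replaces the default trailing newline.
print('no newline here', end='')
print()

# file redirects the output to any object with a write() method.
buffer = io.StringIO()
print('ham', 'egg', sep='-', file=buffer)
captured = buffer.getvalue()
```

Here the last call writes into an in-memory text buffer instead of the screen, so captured holds the printed text including its trailing newline.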

What just happened?

We learned about the print() function and its relation to Python 2 and Python 3.

Code comments

Commenting code is a best practice with the goal of making code clearer for yourself and
other coders (see
https://google-styleguide.googlecode.com/svn/trunk/pyguide.html?showone=Comments#Comments).
Usually, companies and other
organizations have policies regarding code comments, such as comment templates. In this
book, I did not comment the code in such a fashion for brevity and because the text in the
book should clarify the code.

Time for action – commenting code

The most basic comment starts with a hash sign and continues until the end of the line:

1. Comment code with this type of comment as follows:

>>> # Comment from hash to end of line

2. However, if the hash sign is between single or double quotes, then we have a string,

which is an ordered sequence of characters:

>>> astring = '# This is not a comment'

>>> astring

'# This is not a comment'

3. We can also comment multiple lines as a block. This is useful if you want to write a
more detailed description of the code. Comment multiple lines as follows:

"""

Chapter 1 of NumPy Beginners Guide.

Another line of comment.

"""

We refer to this type of comment as triple-quoted for obvious reasons.
It also is used to test code. You can read about testing in Chapter 8, Assuring
Quality with Testing.
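To make the link between triple-quoted text and testing more concrete, here is a minimal sketch using the standard library's doctest module. It is one possible approach rather than necessarily the one Chapter 8 takes; the double() function is just a small example.

```python
import doctest

def double(number):
    """Return twice the given number.

    >>> double(3)
    6
    """
    return 2 * number

# Run the examples embedded in the docstrings of the main module.
# doctest compares the actual output with the text written after each >>> line.
results = doctest.testmod()
print(results.failed)
```

A triple-quoted string placed directly under a def line becomes the function's docstring, which is also what help(double) would display.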


The if statement

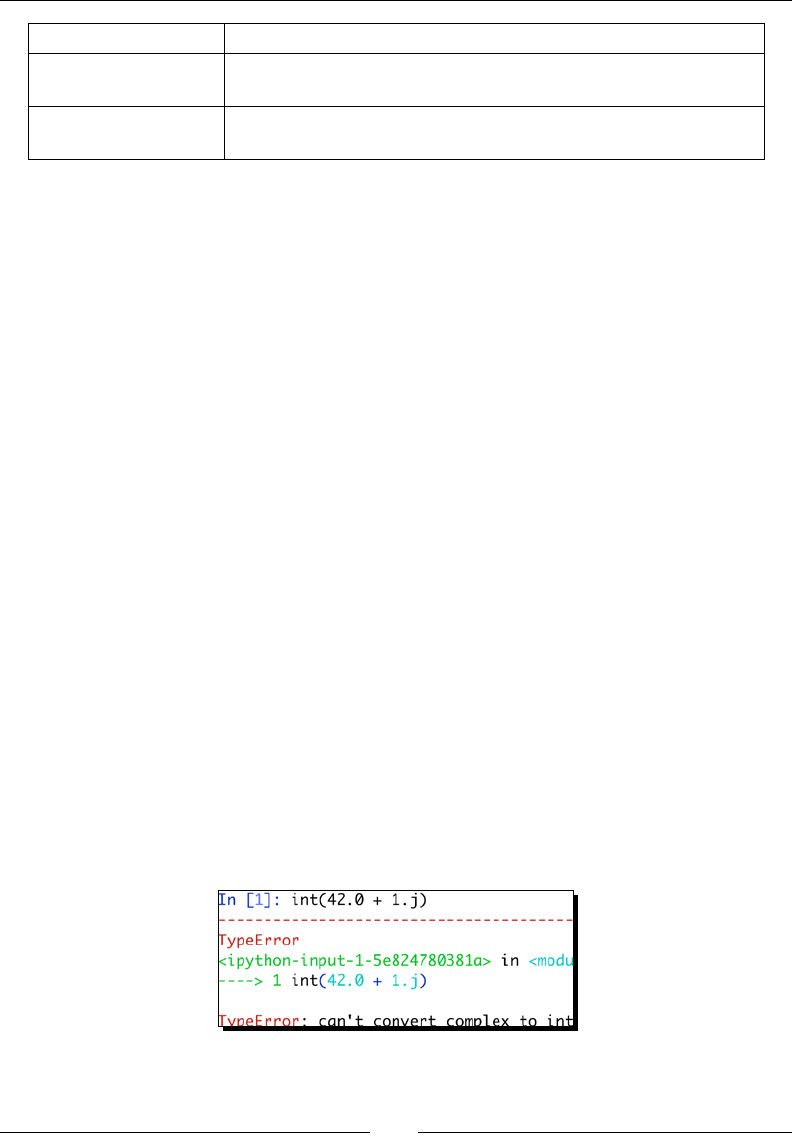

The if statement in Python has a slightly different syntax from other languages, such as C++ and
Java. The most important difference is that indentation matters, which I hope you are
aware of.

Time for action – deciding with the if statement

We can use the if statement in the following ways:

1. Check whether a number is negative as follows:

>>> if 42 < 0:
...     print('Negative')
... else:
...     print('Not negative')
...
Not negative

In the preceding example, Python decided that 42 is not negative. The else clause
is optional. The comparison operators are equivalent to the ones in C++, Java, and
similar languages.

2. Python also has a chained branching logic compound statement for multiple tests
similar to the switch statement in C++, Java, and other programming languages.
Decide whether a number is negative, 0, or positive as follows:

>>> a = -42
>>> if a < 0:
...     print('Negative')
... elif a == 0:
...     print('Zero')
... else:
...     print('Positive')
...
Negative

This time, Python decided that -42 is negative.

What just happened?

We learned how to do branching logic in Python.


The for loop

Python has a for statement with the same purpose as the equivalent construct in C++,
Pascal, Java, and other languages. However, the mechanism of looping is a bit different.

Time for action – repeating instructions with loops

We can use the for loop in the following ways:

1. Loop over an ordered sequence, such as a list, and print each item as follows:

>>> food = ['ham', 'egg', 'spam']
>>> for snack in food:
...     print(snack)
...
ham
egg
spam

2. And remember that, as always, indentation matters in Python. We loop over a range
of values with the built-in range() or xrange() functions. The latter function is
slightly more efficient in certain cases. Loop over the numbers 1-9 with a step of 2
as follows:

>>> for i in range(1, 9, 2):
...     print(i)
...
1
3
5
7

3. The start and step parameters of the range() function are optional, with default
values of 0 and 1 respectively. We can also prematurely end a loop. Loop over the
numbers 0-8 and break out of the loop when you reach 3:

>>> for i in range(9):
...     print(i)
...     if i == 3:
...         print('Three')
...         break
...

0

1

2

3

Three

4. The loop stopped at 3 and we did not print the higher numbers. Instead of leaving
the loop, we can also get out of the current iteration. Print the numbers 0-4,
skipping 3 as follows:

>>> for i in range(5):
...     if i == 3:
...         print('Three')
...         continue
...     print(i)
...
0
1
2
Three
4

5. The last line in the loop was not executed when we reached 3 because of the
continue statement. In Python, the for loop can have an else statement
attached to it. Add an else clause as follows:

>>> for i in range(5):
...     print(i)
... else:
...     print(i, 'in else clause')
...
0
1
2
3
4
4 in else clause

6. Python executes the code in the else clause last. Python also has a while loop. I

do not use it that much because the for loop is more useful in my opinion.
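Two details from the steps above may be worth seeing in code: the else clause of a for loop is skipped when the loop ends through break, and a while loop repeats for as long as its condition holds. The following is a small sketch with made-up variable names.

```python
# The else clause runs only when the loop finishes without hitting break.
found = None
for i in range(5):
    if i == 3:
        found = i
        break
else:
    # Not reached here, because the loop was left through break.
    found = -1

# A while loop repeats as long as its condition is true.
count = 0
while count < 3:
    count += 1

print(found)
print(count)
```

This makes for/else handy for search loops, where the else branch handles the "nothing found" case.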


What just happened?

We learned how to repeat instructions in Python with loops. This section included the break
and continue statements, which respectively exit a loop and skip to its next iteration.

Python functions

Functions are callable blocks of code. We call functions by the name we give them.

Time for action – defining functions

Let's define the following simple function:

1. Print Hello and a given name in the following way:

>>> def print_hello(name):
...     print('Hello ' + name)
...

Call the function as follows:

>>> print_hello('Ivan')

Hello Ivan

2. Some functions do not have arguments, or the arguments have default values. Give
the function a default argument value as follows:

>>> def print_hello(name='Ivan'):
...     print('Hello ' + name)
...

>>> print_hello()

Hello Ivan

3. Usually, we want to return a value. Define a function that doubles input values
as follows:

>>> def double(number):
...     return 2 * number
...

>>> double(3)

6


What just happened?

We learned how to define functions. Functions can have default argument values and
return values.

Python modules

A file containing Python code is called a module. A module can import other modules,
functions in other modules, and other parts of modules. The filenames of Python modules
end with .py. The name of the module is the same as the filename minus the .py suffix.

Time for action – importing modules

Importing modules can be done in the following manner:

1. If the filename is, for instance, mymodule.py, import it as follows:

>>> import mymodule

2. The standard Python distribuon has a math module. Aer imporng it, list the

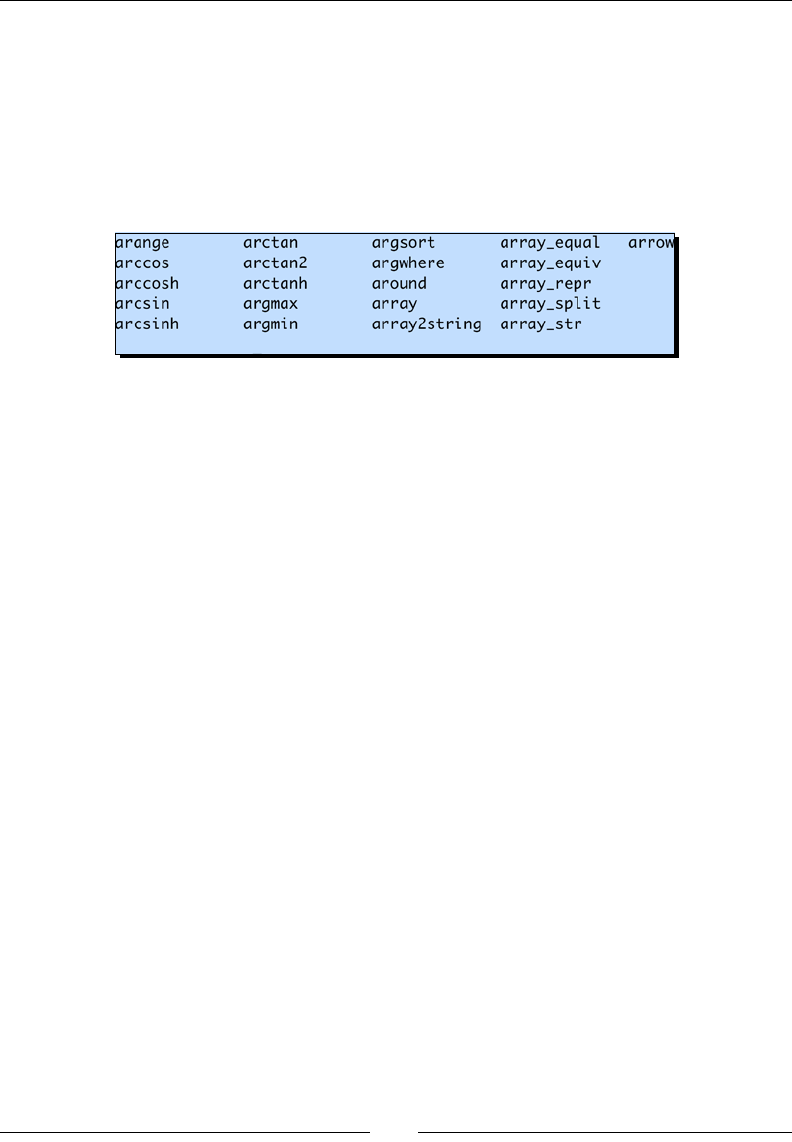

funcons and aributes in the module as follows:

>>> import math

>>> dir(math)

['__doc__', '__file__', '__name__', '__package__', 'acos',

'acosh', 'asin', 'asinh', 'atan', 'atan2', 'atanh', 'ceil',

'copysign', 'cos', 'cosh', 'degrees', 'e', 'erf', 'erfc', 'exp',

'expm1', 'fabs', 'factorial', 'floor', 'fmod', 'frexp', 'fsum',

'gamma', 'hypot', 'isinf', 'isnan', 'ldexp', 'lgamma', 'log',

'log10', 'log1p', 'modf', 'pi', 'pow', 'radians', 'sin', 'sinh',

'sqrt', 'tan', 'tanh', 'trunc']

3. Call the pow() function in the math module:

>>> math.pow(2, 3)

8.0

Notice the dot in the syntax. We can also import a function directly and call it by its
short name. Import and call the pow() function as follows:

>>> from math import pow

>>> pow(2, 3)

8.0


4. Python lets us define aliases for imported modules and functions. This is a good time
to introduce the import conventions we are going to use for NumPy and a plotting
library we will use a lot:

import numpy as np

import matplotlib.pyplot as plt
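The alias mechanism can be tried out with the standard library alone, before NumPy is installed. In the sketch below, the alias names m and power are arbitrary choices for this example.

```python
# Alias a whole module.
import math as m

# Alias a single imported function.
from math import pow as power

root = m.sqrt(16)
cube = power(2, 3)

print(root)
print(cube)
```

The np and plt aliases shown above work in exactly the same way; they are simply the names the community has settled on.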

What just happened?

We learned about modules, importing modules, importing functions, calling functions in
modules, and the import conventions of this book. This concludes the Python refresher.

NumPy on Windows

Installing NumPy on Windows is straightforward. You only need to download an installer,
and a wizard will guide you through the installation steps.

Time for action – installing NumPy, matplotlib, SciPy, and IPython on Windows

Installing NumPy on Windows is necessary but this is, fortunately, a straightforward task that
we will cover in detail. It is recommended that you install matplotlib, SciPy, and IPython.
However, this is not required to enjoy this book. The actions we will take are as follows:

1. Download a NumPy installer for Windows from the SourceForge website

http://sourceforge.net/projects/numpy/files/.

Choose the appropriate NumPy version according to your Python version. In
the preceding screen shot, we chose numpy-1.9.2-win32-superpack-python2.7.exe.


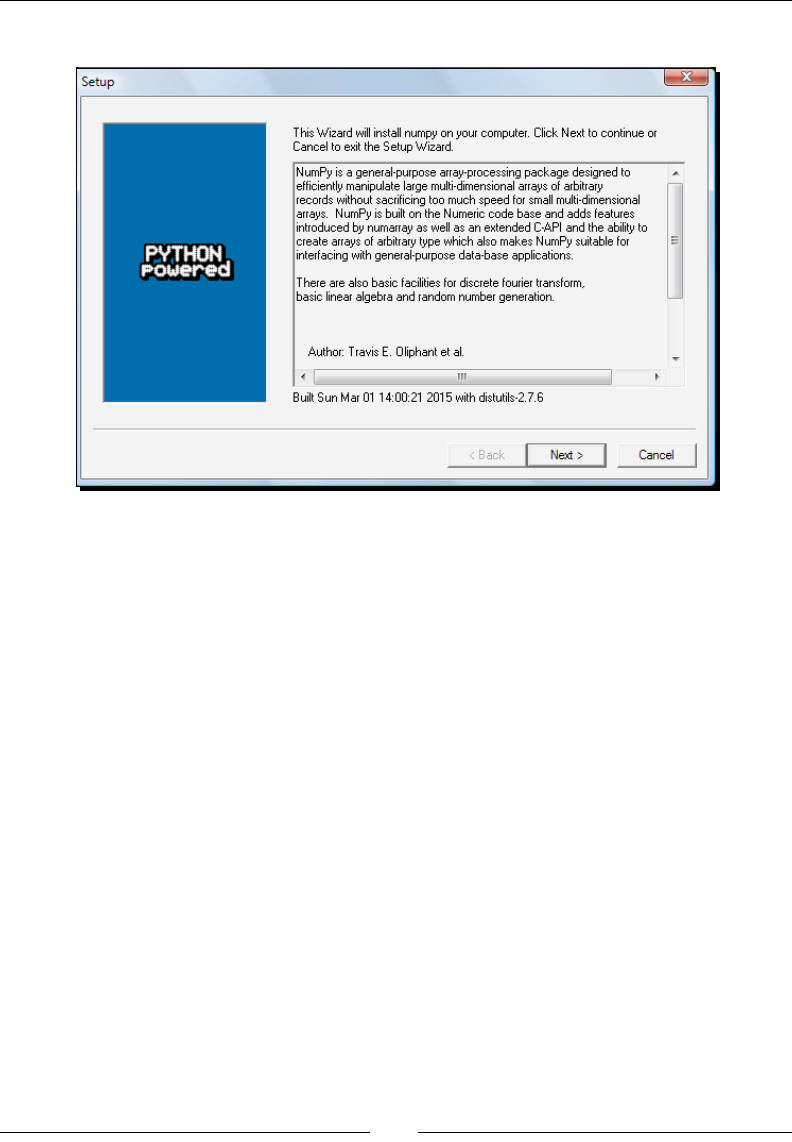

2. Open the EXE installer by double-clicking on it as shown in the following screen shot:

3. Now, we can see a description of NumPy and its features. Click on Next.

4. If you have Python installed, it should automatically be detected. If it is not
detected, your path settings might be wrong. At the end of this chapter, we have
listed resources in case you have problems with installing NumPy.

5. In this example, Python 2.7 was found. Click on Next if Python is found; otherwise,
click on Cancel and install Python (NumPy cannot be installed without Python).
Click on Next. This is the point of no return. Well, kind of, but it is best to make sure
that you are installing to the proper directory and so on and so forth. Now the real
installation starts. This may take a while.

Install SciPy and matplotlib with the Enthought Canopy distribution
(https://www.enthought.com/products/canopy/). It might be necessary to put the
msvcp71.dll file in your C:\Windows\system32 directory. You can get it from
http://www.dll-files.com/dllindex/dll-files.shtml?msvcp71

A Windows IPython installer is available on the IPython website (see http://ipython.org/).

What just happened?

We installed NumPy, SciPy, matplotlib, and IPython on Windows.


NumPy on Linux

Installing NumPy and its related recommended software on Linux depends on the
distribution you have. We will discuss how you will install NumPy from the command line,
although you can probably use graphical installers; it depends on your distribution (distro).
The commands to install matplotlib, SciPy, and IPython are the same; only the package
names are different. Installing matplotlib, SciPy, and IPython is recommended, but optional.

Time for action – installing NumPy, matplotlib, SciPy, and IPython on Linux

Most Linux distributions have NumPy packages. We will go through the necessary commands
for some of the most popular Linux distros:

Installing NumPy on Red Hat: Run the following instructions from the
command line:

$ yum install python-numpy

Installing NumPy on Mandriva: To install NumPy on Mandriva, run the following
command line instruction:

$ urpmi python-numpy

Installing NumPy on Gentoo: To install NumPy on Gentoo, run the following
command line instruction:

$ [sudo] emerge numpy

Installing NumPy on Debian and Ubuntu: On Debian or Ubuntu, type the following

on the command line:

$ [sudo] apt-get install python-numpy

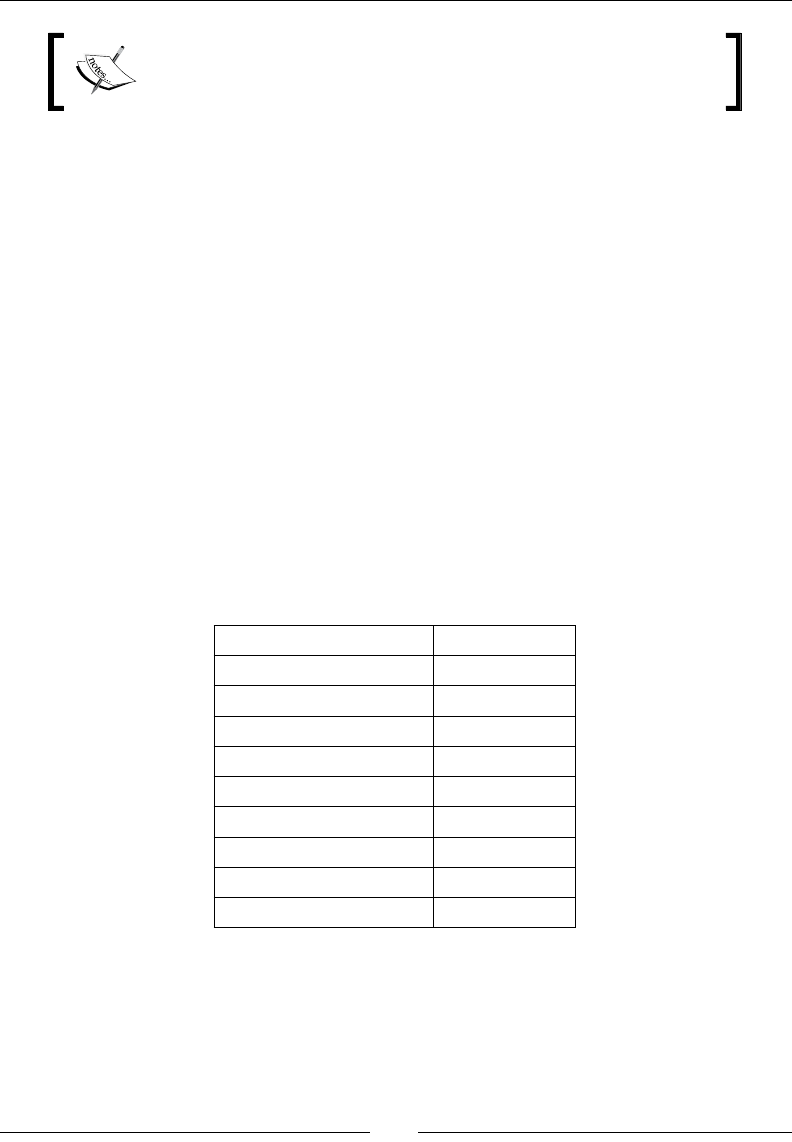

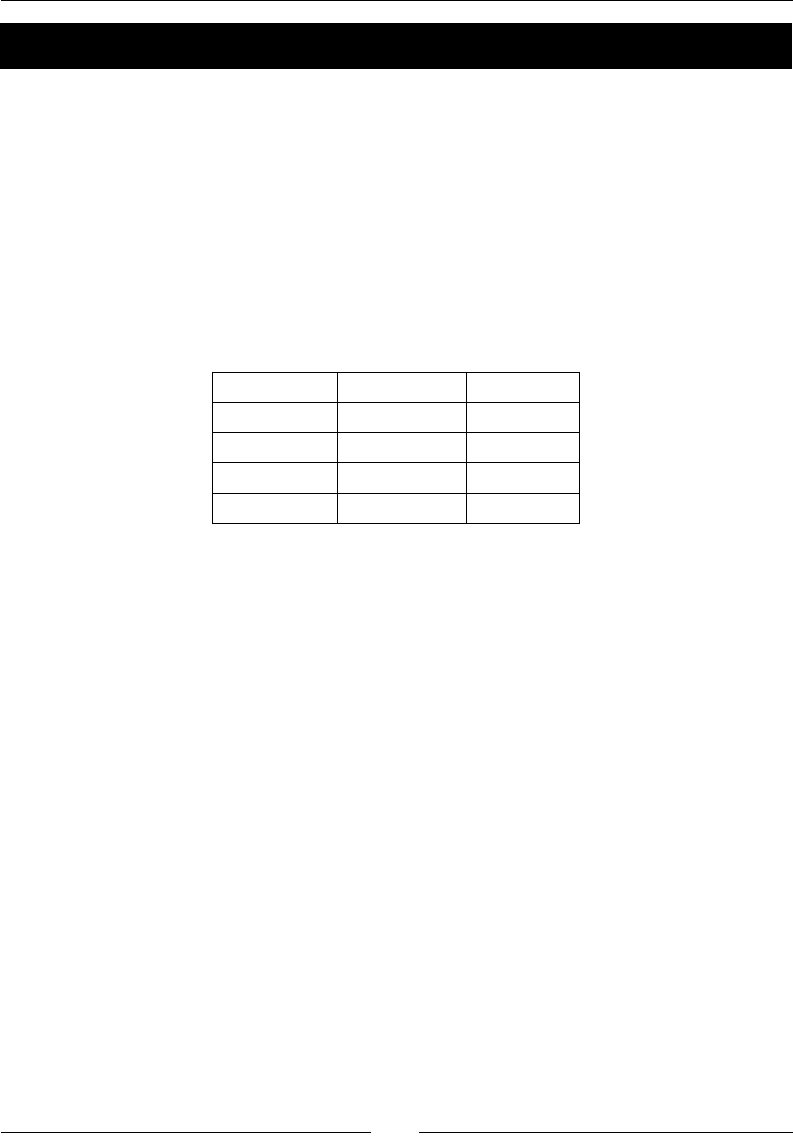

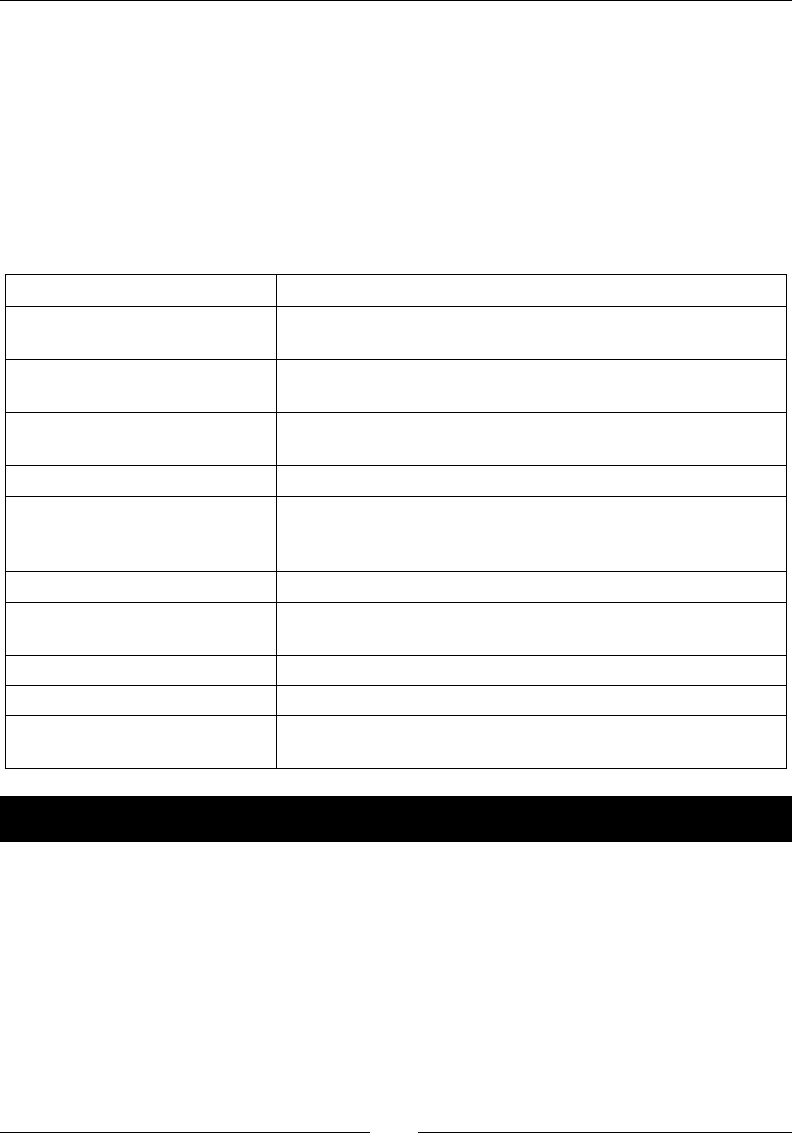

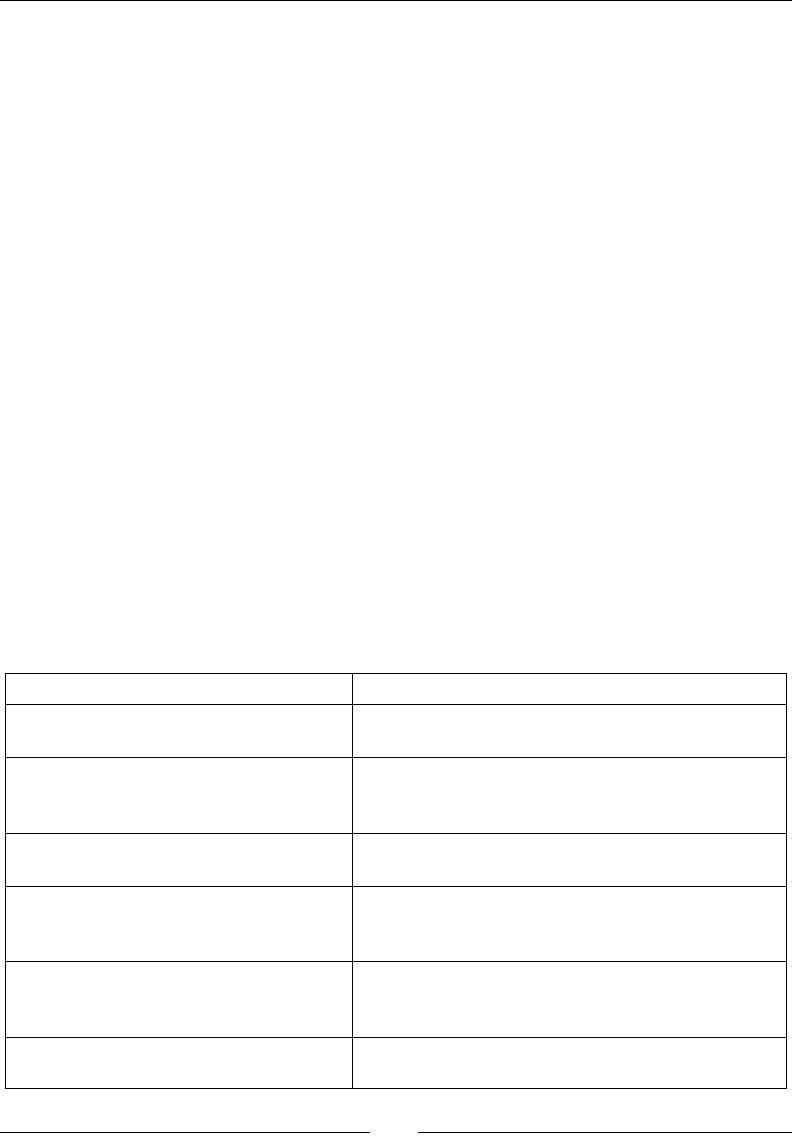

The following table gives an overview of the Linux distribuons and the

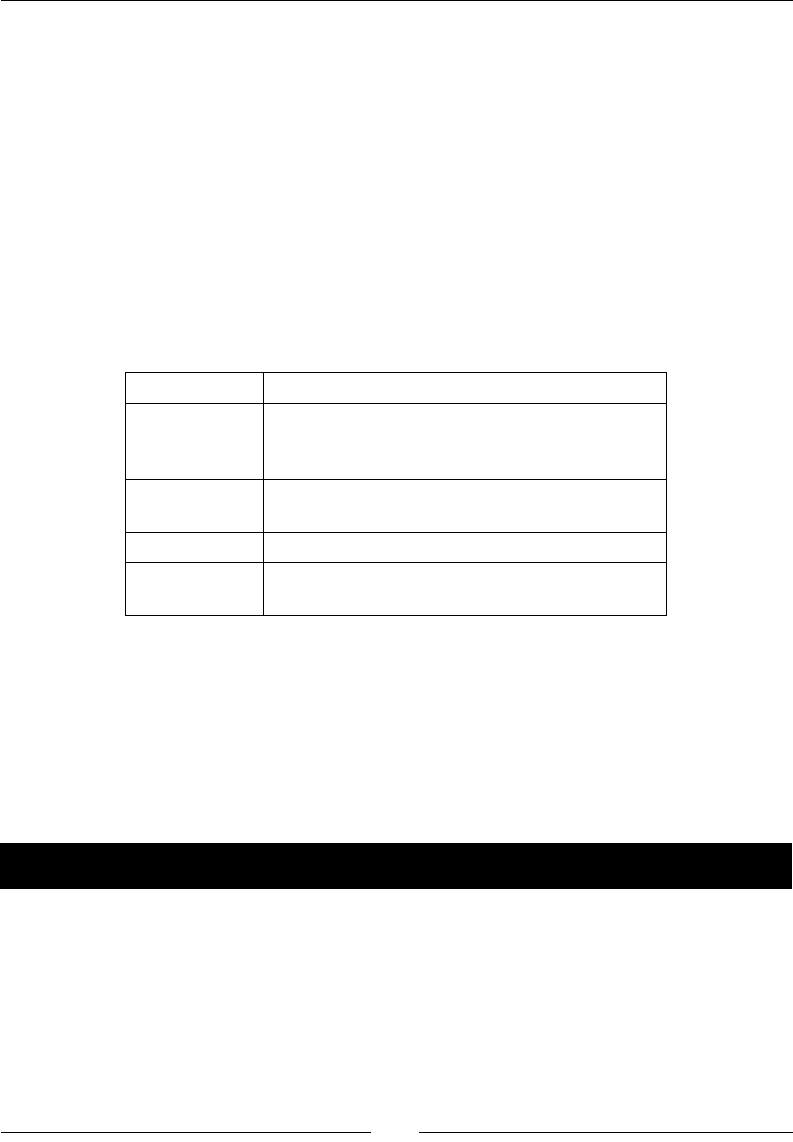

corresponding package names for NumPy, SciPy, matplotlib, and IPython:

Linux distribution NumPy SciPy matplotlib IPython

Arch Linux python-numpy python-

scipy

python-

matplotlib

ipython

Debian python-numpy python-

scipy

python-

matplotlib

ipython

Fedora numpy python-

scipy

python-

matplotlib

ipython

Gentoo dev-python/

numpy

scipy matplotlib ipython

NumPy Quick Start

[ 16 ]

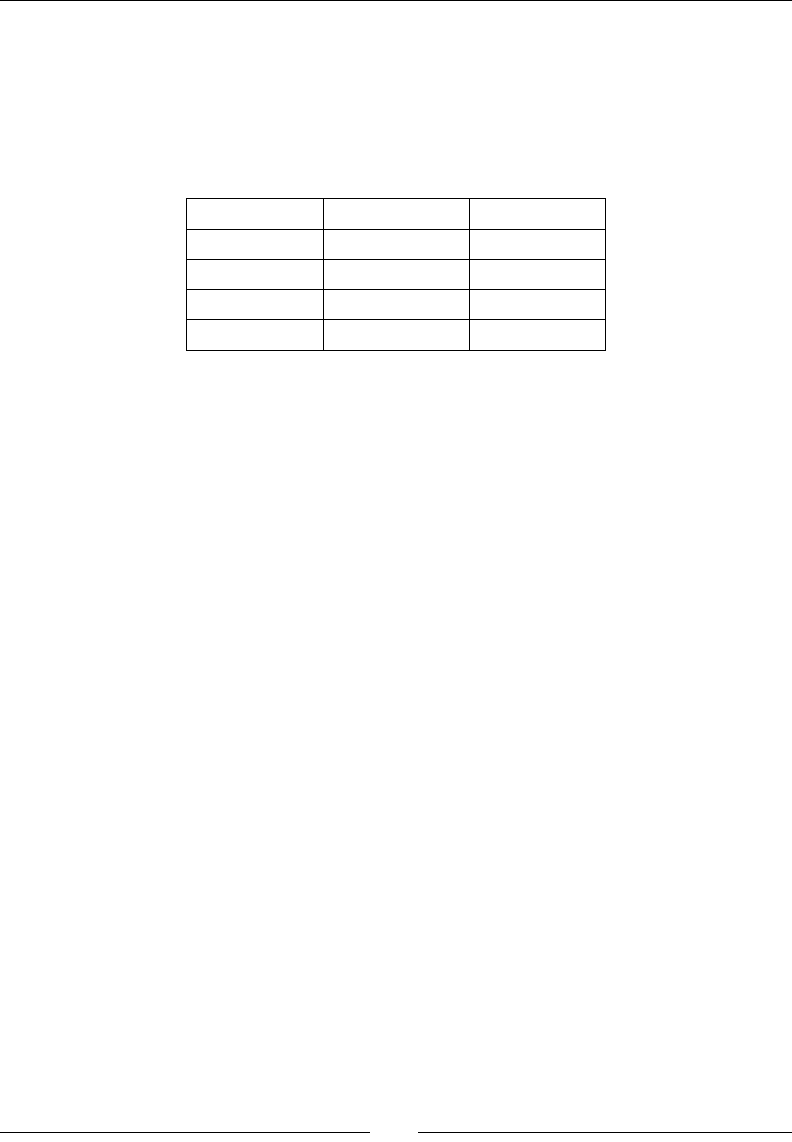

Linux distribution NumPy SciPy matplotlib IPython

OpenSUSE python-numpy,

python-numpy-

devel

python-

scipy

python-

matplotlib

ipython

Slackware numpy scipy matplotlib ipython

NumPy on Mac OS X

You can install NumPy, matplotlib, and SciPy on the Mac OS X with a GUI installer (not

possible for all versions) or from the command line with a port manager such as MacPorts,

Homebrew, or Fink, depending on your preference. You can also install using a script from

https://github.com/fonnesbeck/ScipySuperpack.

Time for action – installing NumPy, SciPy, matplotlib, and IPython with MacPorts or Fink

Alternatively, we can install NumPy, SciPy, matplotlib, and IPython through the MacPorts
route or with Fink. The following installation steps show how to install all these packages:

Installing with MacPorts: Type the following command:

$ [sudo] port install py-numpy py-scipy py-matplotlib py-ipython

Installing with Fink: Fink also has packages for NumPy: scipy-core-py24,
scipy-core-py25, and scipy-core-py26. The SciPy packages are scipy-py24,
scipy-py25, and scipy-py26. We can install NumPy and the additional recommended
packages for Python 2.7, the version this book refers to, using the following command:

$ fink install scipy-core-py27 scipy-py27 matplotlib-py27

What just happened?

We installed NumPy and the additional recommended software on Mac OS X with
MacPorts and Fink.

Building from source

We can retrieve the source code for NumPy with git as follows:

$ git clone git://github.com/numpy/numpy.git numpy

Alternatively, download the source from http://sourceforge.net/projects/numpy/files/.


Install in /usr/local with the following command:

$ python setup.py build

$ [sudo] python setup.py install --prefix=/usr/local

To build, we need a C compiler such as GCC and the Python header files in the python-dev
or python-devel packages.
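Whichever installation route you took, a quick way to confirm that Python can find NumPy is sketched below. It assumes the installation succeeded; the check variable is made up for this example.

```python
import numpy as np

# The version string confirms which NumPy build Python picked up.
print(np.__version__)

# A tiny computation confirms that the compiled parts load and run.
check = np.arange(3) ** 2
print(check.tolist())
```

If the import fails with an ImportError, NumPy was probably installed for a different Python interpreter than the one you are running.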

Arrays

After going through the installation of NumPy, it's time to have a look at NumPy arrays.
NumPy arrays are more efficient than Python lists when it comes to numerical operations.
NumPy code requires fewer explicit loops than the equivalent Python code.

Time for action – adding vectors

Imagine that we want to add two vectors called a and b (see https://www.khanacademy.org/science/physics/one-dimensional-motion/displacement-velocity-time/v/introduction-to-vectors-and-scalars). Vector is used here in the mathematical sense, meaning a one-dimensional array. We will learn in Chapter 5, Working with Matrices and ufuncs, about specialized NumPy arrays, which represent matrices. Vector a holds the squares of the integers 0 up to, but not including, n; for instance, if n is equal to 3, then a is equal to (0, 1, 4). Vector b holds the cubes of the integers 0 up to, but not including, n, so if n is equal to 3, then b is equal to (0, 1, 8). How would you do that using plain Python? After we come up with a solution, we will compare it to the NumPy equivalent.

1. Adding vectors using pure Python: The following function solves the vector addition problem using pure Python without NumPy:

def pythonsum(n):
    # list() keeps this working on Python 3, where range() is not a list
    a = list(range(n))
    b = list(range(n))
    c = []

    for i in range(len(a)):
        a[i] = i ** 2
        b[i] = i ** 3
        c.append(a[i] + b[i])

    return c


Downloading the example code files

You can download the example code files from your account at http://www.packtpub.com for all the Packt Publishing books you have purchased. If you purchased this book elsewhere, you can visit http://www.packtpub.com/support and register to have the files e-mailed directly to you.

2. Adding vectors using NumPy: The following function achieves the same result with NumPy:

import numpy as np

def numpysum(n):
    a = np.arange(n) ** 2
    b = np.arange(n) ** 3
    c = a + b

    return c

Notice that numpysum() does not need a for loop. Also, we used the arange() function from NumPy, which creates a NumPy array for us with the integers 0 to n - 1. The arange() function was imported; that is why it is prefixed with numpy (actually, it is customary to abbreviate it via an alias to np).
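To make the n equal to 3 example from the problem statement concrete, here is a small sketch of what each intermediate array holds. The tolist() calls are only there so that the values print as ordinary Python lists:

```python
import numpy as np

n = 3
a = np.arange(n) ** 2  # squares of 0, 1, 2
b = np.arange(n) ** 3  # cubes of 0, 1, 2
c = a + b              # element-wise sum, no loop needed

print(np.arange(n).tolist())  # [0, 1, 2]
print(a.tolist())             # [0, 1, 4]
print(b.tolist())             # [0, 1, 8]
print(c.tolist())             # [0, 2, 12]
```

The ** and + operators act on every element at once, which is what replaces the explicit for loop of the pure Python version.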

Now comes the fun part. The preface mentions that NumPy is faster when it comes to array operations. How much faster is NumPy, though? The following program will show us by measuring the elapsed time, in microseconds, for the numpysum() and pythonsum() functions. It also prints the last two elements of the vector sum. Let's check that we get the same answers by using Python and NumPy:

#!/usr/bin/env python
from __future__ import print_function
import sys
from datetime import datetime
import numpy as np

"""
Chapter 1 of NumPy Beginners Guide.
This program demonstrates vector addition the Python way.
Run from the command line as follows

  python vectorsum.py n

where n is an integer that specifies the size of the vectors.

The first vector to be added contains the squares of 0 up to n.
The second vector contains the cubes of 0 up to n.
The program prints the last 2 elements of the sum and the elapsed
time.
"""

def numpysum(n):
    a = np.arange(n) ** 2
    b = np.arange(n) ** 3
    c = a + b

    return c

def pythonsum(n):
    # list() keeps this working on Python 3, where range() is not a list
    a = list(range(n))
    b = list(range(n))
    c = []

    for i in range(len(a)):
        a[i] = i ** 2
        b[i] = i ** 3
        c.append(a[i] + b[i])

    return c

size = int(sys.argv[1])

# Time the pure Python version
start = datetime.now()
c = pythonsum(size)
delta = datetime.now() - start
print("The last 2 elements of the sum", c[-2:])
print("PythonSum elapsed time in microseconds", delta.microseconds)

# Time the NumPy version
start = datetime.now()
c = numpysum(size)
delta = datetime.now() - start
print("The last 2 elements of the sum", c[-2:])
print("NumPySum elapsed time in microseconds", delta.microseconds)


The output of the program for 1000, 2000, and 4000 vector elements is as follows:

$ python vectorsum.py 1000

The last 2 elements of the sum [995007996, 998001000]

PythonSum elapsed time in microseconds 707

The last 2 elements of the sum [995007996 998001000]

NumPySum elapsed time in microseconds 171

$ python vectorsum.py 2000

The last 2 elements of the sum [7980015996, 7992002000]

PythonSum elapsed time in microseconds 1420

The last 2 elements of the sum [7980015996 7992002000]

NumPySum elapsed time in microseconds 168

$ python vectorsum.py 4000

The last 2 elements of the sum [63920031996, 63968004000]

PythonSum elapsed time in microseconds 2829

The last 2 elements of the sum [63920031996 63968004000]

NumPySum elapsed time in microseconds 274
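One caveat about the timing code in the program above: the microseconds attribute of a timedelta holds only the sub-second part of the duration, not the whole duration. The runs shown all finish well under one second, so their figures are unaffected. The sketch below uses a made-up duration (an assumption for illustration only) to show the difference:

```python
from datetime import timedelta

# A hypothetical elapsed time of 1 second and 500 microseconds.
delta = timedelta(seconds=1, microseconds=500)

sub_second_part = delta.microseconds                     # 500
whole_duration = int(round(delta.total_seconds() * 1e6)) # 1000500

print(sub_second_part)
print(whole_duration)
```

For measurements that may exceed one second, total_seconds() is the safer basis for reporting.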

What just happened?

Clearly, NumPy is much faster than the equivalent normal Python code: in the runs above, by a factor of roughly 4 to 10. One thing is certain: we get the same results whether we use NumPy or not. However, the printed result differs in representation. Notice that the result from the numpysum() function does not have any commas. How come? Obviously, we are not dealing with a Python list but with a NumPy array. It was mentioned in the Preface that NumPy arrays are specialized data structures for numerical data. We will learn more about NumPy arrays in the next chapter.
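The difference in representation can be reproduced in isolation. This is a small sketch using the two values printed for n equal to 1000; the names as_list and as_array are illustrative, and the explicit int64 type is an assumption made so that the values fit on every platform:

```python
import numpy as np

as_list = [995007996, 998001000]
as_array = np.array(as_list, dtype=np.int64)

print(as_list)                       # list: elements separated by commas
print(as_array)                      # array: elements separated by spaces
print(type(as_array).__name__)       # ndarray
print(as_array.tolist() == as_list)  # True: same values, different container
```

Calling tolist() converts the array back to a plain Python list when a list is really needed.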

Pop quiz – Functioning of the arange() function

Q1. What does arange(5) do?

1. Creates a Python list of 5 elements with the values 1-5.

2. Creates a Python list of 5 elements with the values 0-4.

3. Creates a NumPy array with the values 1-5.

4. Creates a NumPy array with the values 0-4.

5. None of the above.


Have a go hero – continue the analysis

The program we used to compare the speed of NumPy and regular Python is not very scientific. We should at least repeat each measurement a couple of times. It will be nice to be able to calculate some statistics such as average times. Also, you might want to show plots of the measurements to friends and colleagues.

Hints to help can be found in the online documentation and the resources listed at the end of this chapter. NumPy has statistical functions that can calculate averages for you. I recommend using matplotlib to produce plots. Chapter 9, Plotting with matplotlib, gives a quick overview of matplotlib.
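One possible starting point for this exercise, offered as a sketch rather than a reference solution, is the standard library timeit module combined with NumPy's mean. The helper time_us and its repeats and number defaults are hypothetical choices, and pythonsum is written here as a list comprehension instead of the loop used earlier:

```python
import timeit

import numpy as np

def numpysum(n):
    return np.arange(n) ** 2 + np.arange(n) ** 3

def pythonsum(n):
    # Same result as the loop version, written as a list comprehension.
    return [i ** 2 + i ** 3 for i in range(n)]

def time_us(func, n, repeats=5, number=100):
    """Return per-call times in microseconds, one value per repeat."""
    totals = timeit.repeat(lambda: func(n), repeat=repeats, number=number)
    return np.array(totals) / number * 1e6

n = 1000
for func in (pythonsum, numpysum):
    times = time_us(func, n)
    print(func.__name__, "mean:", times.mean(), "min:", times.min())
```

The measured values depend on the machine, so no expected output is shown. The array returned by time_us can be passed to matplotlib directly for plotting.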

IPython – an interactive shell

Scientists and engineers are used to experimenting. Scientists created IPython with experimentation in mind. Many view the interactive environment that IPython provides as a direct answer to MATLAB, Mathematica, and Maple. You can find more information, including installation instructions, at http://ipython.org/.

IPython is free, open source, and available for Linux, UNIX, Mac OS X, and Windows. The IPython authors only request that you cite IPython in any scientific work that uses IPython.

The following is a list of the basic IPython features:

- Tab completion

- History mechanism

- Inline editing

- Ability to call external Python scripts with %run

- Access to system commands

- Pylab switch

- Access to Python debugger and profiler

The Pylab switch imports all the SciPy, NumPy, and matplotlib packages. Without this switch,

we will have to import every package we need ourselves.


All we need to do is enter the following instruction on the command line:

$ ipython --pylab

IPython 2.4.1 -- An enhanced Interactive Python.

? -> Introduction and overview of IPython's features.

%quickref -> Quick reference.

help -> Python's own help system.

object? -> Details about 'object', use 'object??' for extra details.

Using matplotlib backend: MacOSX

In [1]: quit()

The quit() command or Ctrl + D quits the IPython shell. We may want to be able to go back to our experiments. In IPython, it is easy to save a session for later:

In [1]: %logstart

Activating auto-logging. Current session state plus future input saved.

Filename : ipython_log.py

Mode : rotate

Output logging : False

Raw input log : False

Timestamping : False

State : active

Let's say we have the vector addition program that we made in the current directory. Run the script as follows:

In [1]: ls

README vectorsum.py

In [2]: %run -i vectorsum.py 1000

As you probably remember, 1000 specifies the number of elements in a vector. The -d switch of %run starts an ipdb debugger. At the ipdb prompt, c starts the script and n steps through the code. Typing quit at the ipdb prompt exits the debugger:

In [2]: %run -d vectorsum.py 1000

*** Blank or comment

*** Blank or comment

Breakpoint 1 at: /Users/…/vectorsum.py:3


Enter c at the ipdb> prompt to start your script.

> <string>(1)<module>()

ipdb> c

> /Users/…/vectorsum.py(3)<module>()

2

1---> 3 import sys

4 from datetime import datetime

ipdb> n

> /Users/…/vectorsum.py(4)<module>()

1 3 import sys

----> 4 from datetime import datetime

5 import numpy

ipdb> n

> /Users/…/vectorsum.py(5)<module>()

4 from datetime import datetime

----> 5 import numpy

6

ipdb> quit

We can also profile our script by passing the -p option to %run:

In [4]: %run -p vectorsum.py 1000

1058 function calls (1054 primitive calls) in 0.002 CPU seconds

Ordered by: internal time

ncalls tottime percall cumtime percall filename:lineno(function)
1 0.001 0.001 0.001 0.001 vectorsum.py:28(pythonsum)
1 0.001 0.001 0.002 0.002 {execfile}
1000 0.000 0.000 0.000 0.000 {method 'append' of 'list' objects}
1 0.000 0.000 0.002 0.002 vectorsum.py:3(<module>)
1 0.000 0.000 0.000 0.000 vectorsum.py:21(numpysum)
3 0.000 0.000 0.000 0.000 {range}
1 0.000 0.000 0.000 0.000 arrayprint.py:175(_array2string)
3/1 0.000 0.000 0.000 0.000 arrayprint.py:246(array2string)