Oracle Database Administrator’s Guide Administrator 12c Release 1(12.1)

Oracle%20Database%20Administrator%20Guide%2012c%20Release%201(12.1)

Oracle%20Database%20Administrator's%20Guide%2012c

User Manual:

Open the PDF directly: View PDF ![]() .

.

Page Count: 1406 [warning: Documents this large are best viewed by clicking the View PDF Link!]

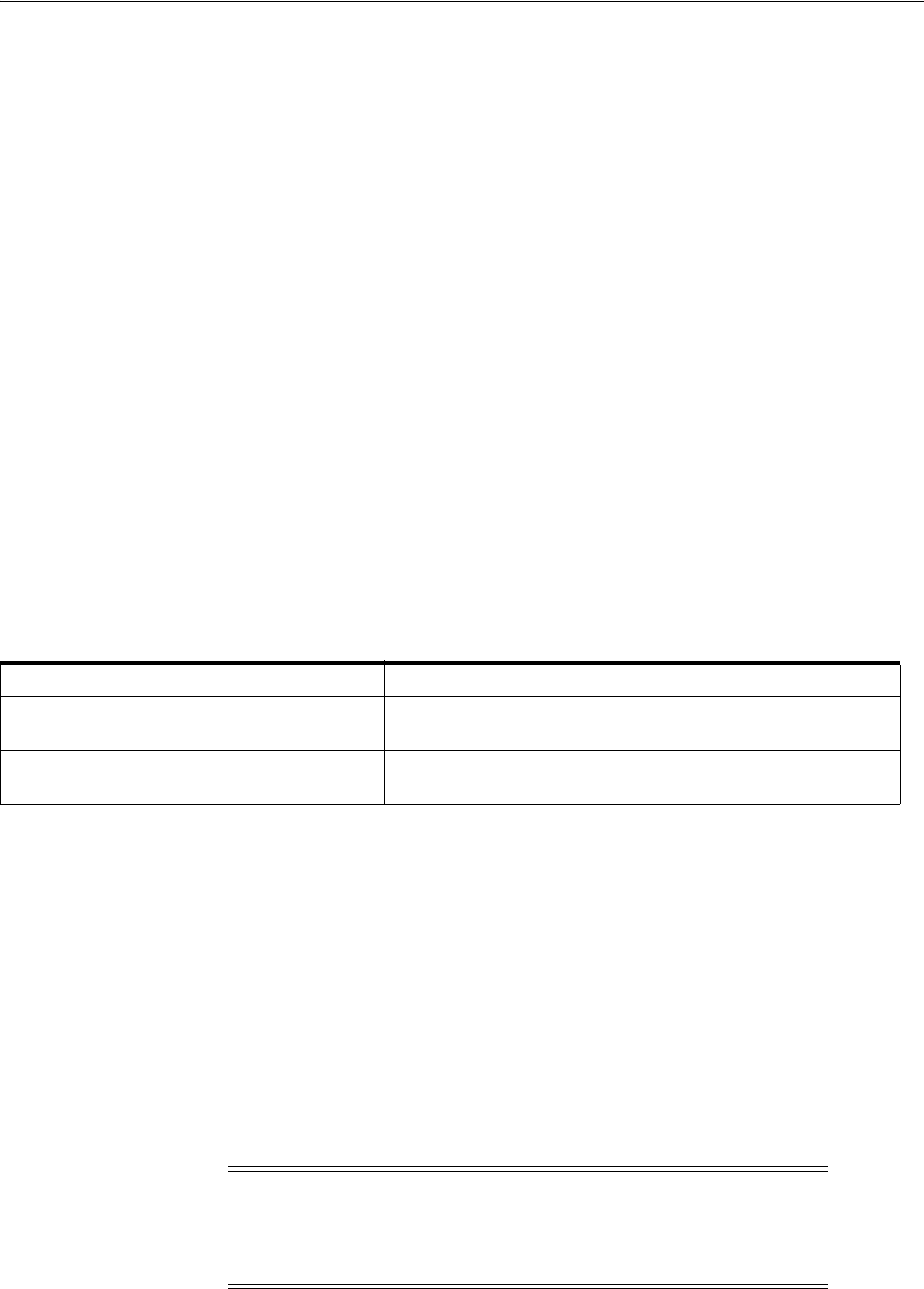

- Contents

- List of Figures

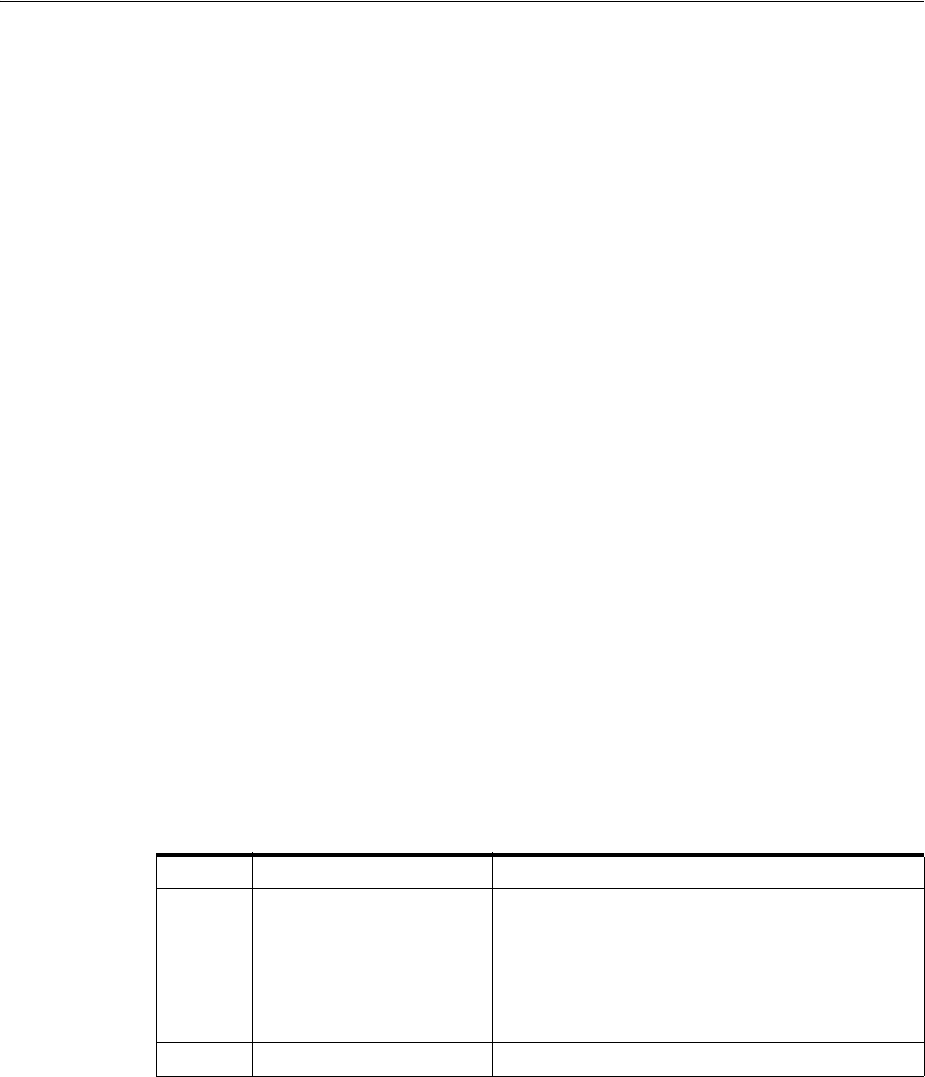

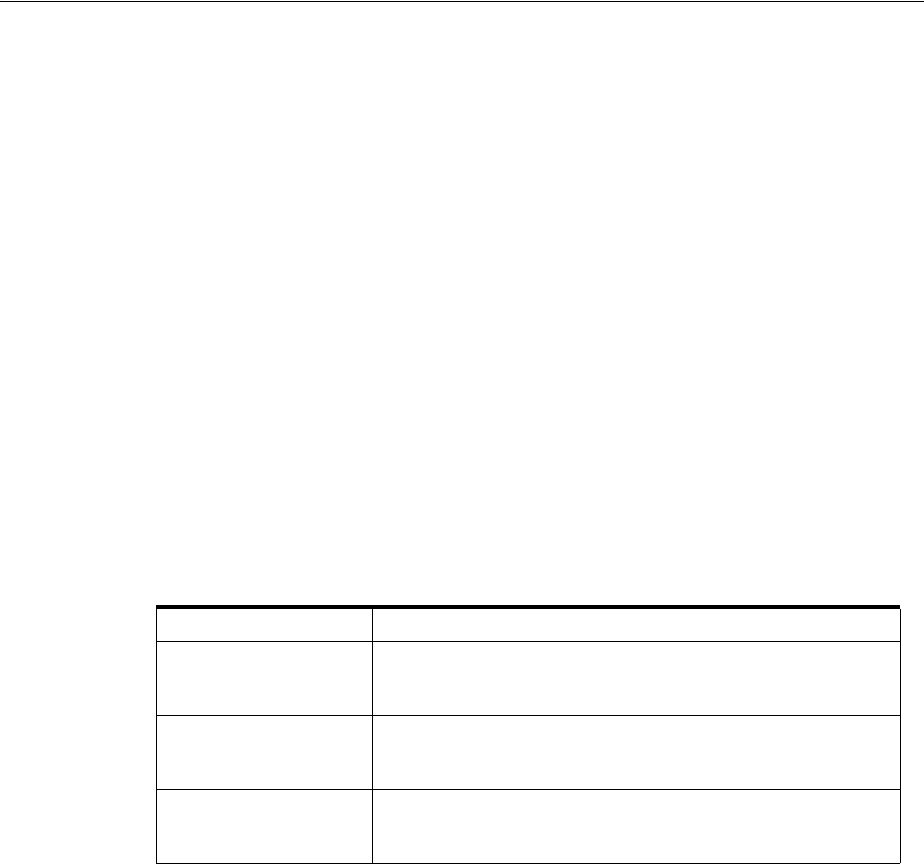

- List of Tables

- Preface

- Changes in This Release for Oracle Database Administrator's Guide

- Part I Basic Database Administration

- 1 Getting Started with Database Administration

- Types of Oracle Database Users

- Tasks of a Database Administrator

- Task 1: Evaluate the Database Server Hardware

- Task 2: Install the Oracle Database Software

- Task 3: Plan the Database

- Task 4: Create and Open the Database

- Task 5: Back Up the Database

- Task 6: Enroll System Users

- Task 7: Implement the Database Design

- Task 8: Back Up the Fully Functional Database

- Task 9: Tune Database Performance

- Task 10: Download and Install Patches

- Task 11: Roll Out to Additional Hosts

- Submitting Commands and SQL to the Database

- Identifying Your Oracle Database Software Release

- About Database Administrator Security and Privileges

- Database Administrator Authentication

- Creating and Maintaining a Database Password File

- Data Utilities

- 2 Creating and Configuring an Oracle Database

- About Creating an Oracle Database

- Creating a Database with DBCA

- Creating a Database with the CREATE DATABASE Statement

- Step 1: Specify an Instance Identifier (SID)

- Step 2: Ensure That the Required Environment Variables Are Set

- Step 3: Choose a Database Administrator Authentication Method

- Step 4: Create the Initialization Parameter File

- Step 5: (Windows Only) Create an Instance

- Step 6: Connect to the Instance

- Step 7: Create a Server Parameter File

- Step 8: Start the Instance

- Step 9: Issue the CREATE DATABASE Statement

- Step 10: Create Additional Tablespaces

- Step 11: Run Scripts to Build Data Dictionary Views

- Step 12: (Optional) Run Scripts to Install Additional Options

- Step 13: Back Up the Database.

- Step 14: (Optional) Enable Automatic Instance Startup

- Specifying CREATE DATABASE Statement Clauses

- Protecting Your Database: Specifying Passwords for Users SYS and SYSTEM

- Creating a Locally Managed SYSTEM Tablespace

- About the SYSAUX Tablespace

- Using Automatic Undo Management: Creating an Undo Tablespace

- Creating a Default Permanent Tablespace

- Creating a Default Temporary Tablespace

- Specifying Oracle Managed Files at Database Creation

- Supporting Bigfile Tablespaces During Database Creation

- Specifying the Database Time Zone and Time Zone File

- Specifying FORCE LOGGING Mode

- Specifying Initialization Parameters

- About Initialization Parameters and Initialization Parameter Files

- Determining the Global Database Name

- Specifying a Fast Recovery Area

- Specifying Control Files

- Specifying Database Block Sizes

- Specifying the Maximum Number of Processes

- Specifying the DDL Lock Timeout

- Specifying the Method of Undo Space Management

- About The COMPATIBLE Initialization Parameter

- Setting the License Parameter

- Managing Initialization Parameters Using a Server Parameter File

- What Is a Server Parameter File?

- Migrating to a Server Parameter File

- Creating a Server Parameter File

- The SPFILE Initialization Parameter

- Changing Initialization Parameter Values

- Clearing Initialization Parameter Values

- Exporting the Server Parameter File

- Backing Up the Server Parameter File

- Recovering a Lost or Damaged Server Parameter File

- Viewing Parameter Settings

- Managing Application Workloads with Database Services

- Considerations After Creating a Database

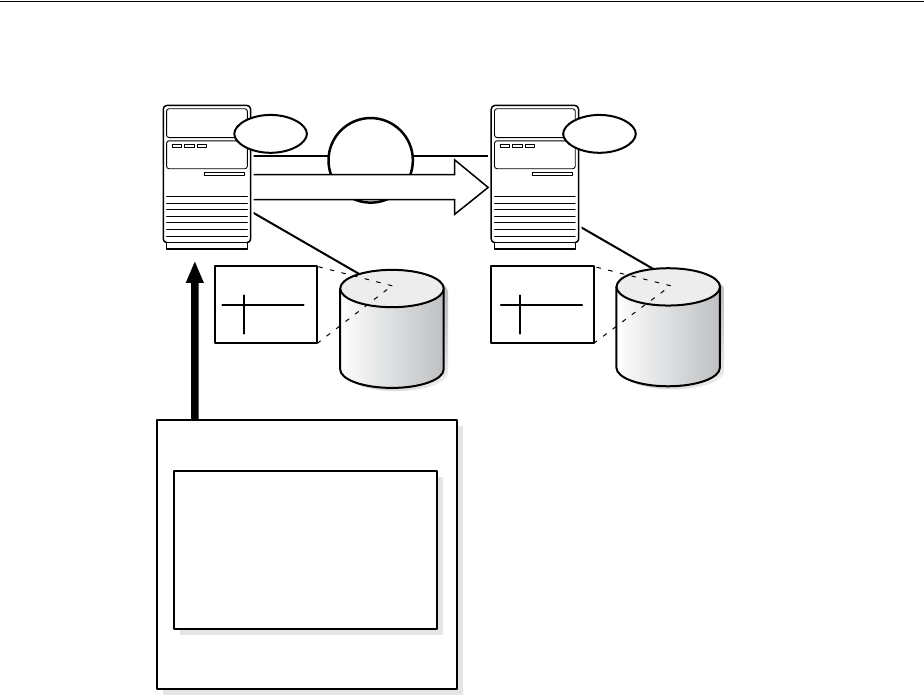

- Cloning a Database with CloneDB

- Dropping a Database

- Database Data Dictionary Views

- Database Configuration Assistant Command Reference for Silent Mode

- 3 Starting Up and Shutting Down

- Starting Up a Database

- About Database Startup Options

- Specifying Initialization Parameters at Startup

- About Automatic Startup of Database Services

- Preparing to Start Up an Instance

- Starting Up an Instance

- Starting an Instance, and Mounting and Opening a Database

- Starting an Instance Without Mounting a Database

- Starting an Instance and Mounting a Database

- Restricting Access to an Instance at Startup

- Forcing an Instance to Start

- Starting an Instance, Mounting a Database, and Starting Complete Media Recovery

- Automatic Database Startup at Operating System Start

- Starting Remote Instances

- Altering Database Availability

- Shutting Down a Database

- Quiescing a Database

- Suspending and Resuming a Database

- Starting Up a Database

- 4 Configuring Automatic Restart of an Oracle Database

- About Oracle Restart

- Oracle Restart Overview

- About Startup Dependencies

- About Starting and Stopping Components with Oracle Restart

- About Starting and Stopping Oracle Restart

- Oracle Restart Configuration

- Oracle Restart Integration with Oracle Data Guard

- Fast Application Notification with Oracle Restart

- Configuring Oracle Restart

- Preparing to Run SRVCTL

- Obtaining Help for SRVCTL

- Adding Components to the Oracle Restart Configuration

- Removing Components from the Oracle Restart Configuration

- Disabling and Enabling Oracle Restart Management for a Component

- Viewing Component Status

- Viewing the Oracle Restart Configuration for a Component

- Modifying the Oracle Restart Configuration for a Component

- Managing Environment Variables in the Oracle Restart Configuration

- Creating and Deleting Database Services with SRVCTL

- Enabling FAN Events in an Oracle Restart Environment

- Automating the Failover of Connections Between Primary and Standby Databases

- Enabling Clients for Fast Connection Failover

- Starting and Stopping Components Managed by Oracle Restart

- Stopping and Restarting Oracle Restart for Maintenance Operations

- SRVCTL Command Reference for Oracle Restart

- CRSCTL Command Reference

- About Oracle Restart

- 5 Managing Processes

- About Dedicated and Shared Server Processes

- About Database Resident Connection Pooling

- Configuring Oracle Database for Shared Server

- Configuring Database Resident Connection Pooling

- About Oracle Database Background Processes

- Managing Processes for Parallel SQL Execution

- Managing Processes for External Procedures

- Terminating Sessions

- Process and Session Data Dictionary Views

- 6 Managing Memory

- About Memory Management

- Memory Architecture Overview

- Using Automatic Memory Management

- Configuring Memory Manually

- Using Automatic Shared Memory Management

- Using Manual Shared Memory Management

- Enabling Manual Shared Memory Management

- Setting the Buffer Cache Initialization Parameters

- Specifying the Shared Pool Size

- Specifying the Large Pool Size

- Specifying the Java Pool Size

- Specifying the Streams Pool Size

- Specifying the Result Cache Maximum Size

- Specifying Miscellaneous SGA Initialization Parameters

- Using Automatic PGA Memory Management

- Using Manual PGA Memory Management

- Using Force Full Database Caching Mode

- Configuring Database Smart Flash Cache

- Using the In-Memory Column Store

- About the IM Column Store

- Initialization Parameters Related to the IM Column Store

- Enabling the IM Column Store for a Database

- Enabling and Disabling Tables for the IM Column Store

- Enabling and Disabling Tablespaces for the IM Column Store

- Enabling and Disabling Materialized Views for the IM Column Store

- Data Pump and the IM Column Store

- Using IM Column Store In Enterprise Manager

- Prerequisites to Using IM Column Store in Enterprise Manager

- Using the In-Memory Column Store Central Home Page to Monitor In-Memory Support for Database Objects

- Specifying In-Memory Details When Creating a Table or Partition

- Viewing or Editing IM Column Store Details of a Table

- Viewing or Editing IM Column Store Details of a Partition

- Specifying IM Column Store Details During Tablespace Creation

- Viewing and Editing IM Column Store Details of a Tablespace

- Specifying IM Column Store Details During Materialized View Creation

- Viewing or Editing IM Column Store Details of a Materialized View

- Memory Management Reference

- 7 Managing Users and Securing the Database

- 8 Monitoring the Database

- 9 Managing Diagnostic Data

- About the Oracle Database Fault Diagnosability Infrastructure

- Investigating, Reporting, and Resolving a Problem

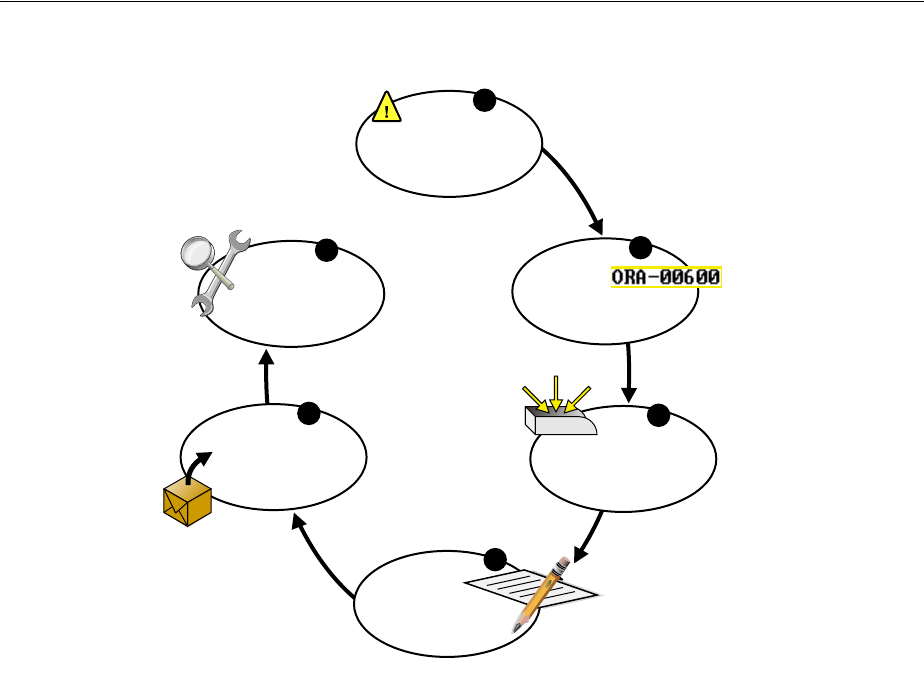

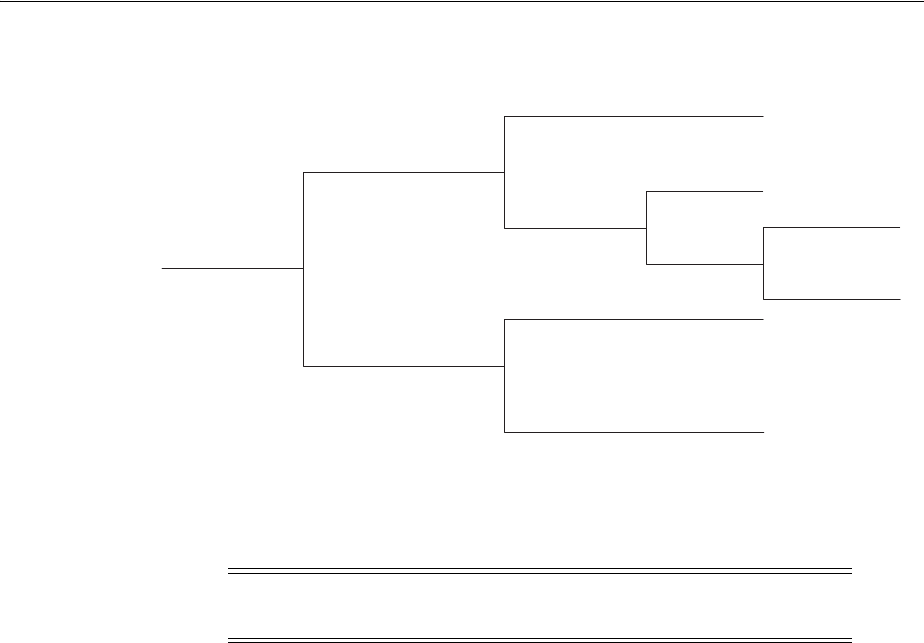

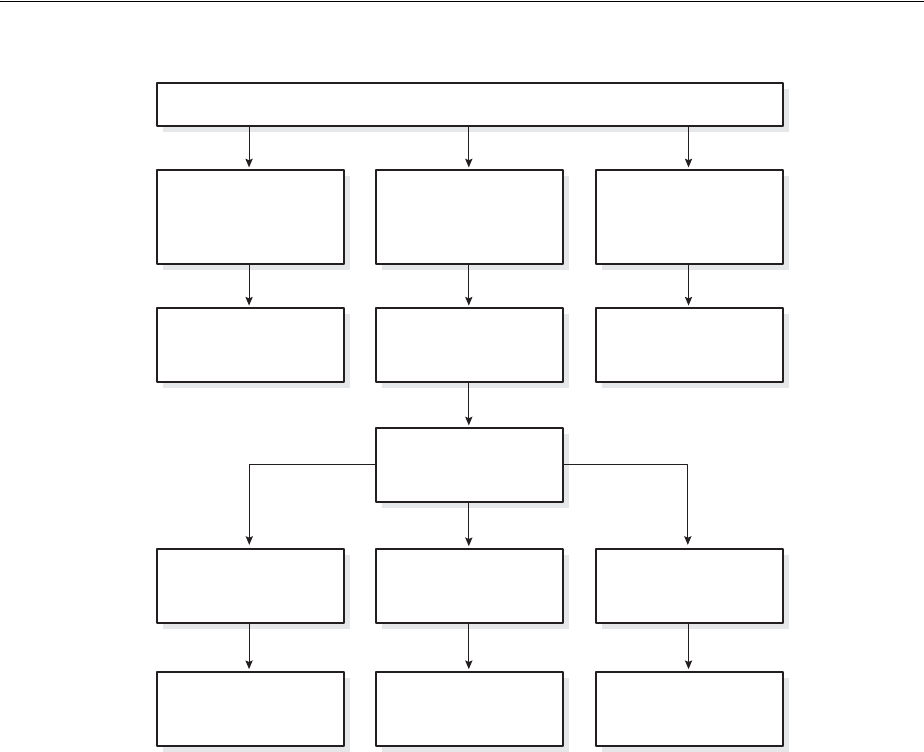

- Roadmap—Investigating, Reporting, and Resolving a Problem

- Task 1: View Critical Error Alerts in Cloud Control

- Task 2: View Problem Details

- Task 3: (Optional) Gather Additional Diagnostic Information

- Task 4: (Optional) Create a Service Request

- Task 5: Package and Upload Diagnostic Data to Oracle Support

- Task 6: Track the Service Request and Implement Any Repairs

- Viewing Problems with the Support Workbench

- Creating a User-Reported Problem

- Viewing the Alert Log

- Finding Trace Files

- Running Health Checks with Health Monitor

- Repairing SQL Failures with the SQL Repair Advisor

- Repairing Data Corruptions with the Data Recovery Advisor

- Creating, Editing, and Uploading Custom Incident Packages

- 1 Getting Started with Database Administration

- Part II Oracle Database Structure and Storage

- 10 Managing Control Files

- 11 Managing the Redo Log

- What Is the Redo Log?

- Planning the Redo Log

- Creating Redo Log Groups and Members

- Relocating and Renaming Redo Log Members

- Dropping Redo Log Groups and Members

- Forcing Log Switches

- Verifying Blocks in Redo Log Files

- Clearing a Redo Log File

- Redo Log Data Dictionary Views

- 12 Managing Archived Redo Log Files

- What Is the Archived Redo Log?

- Choosing Between NOARCHIVELOG and ARCHIVELOG Mode

- Controlling Archiving

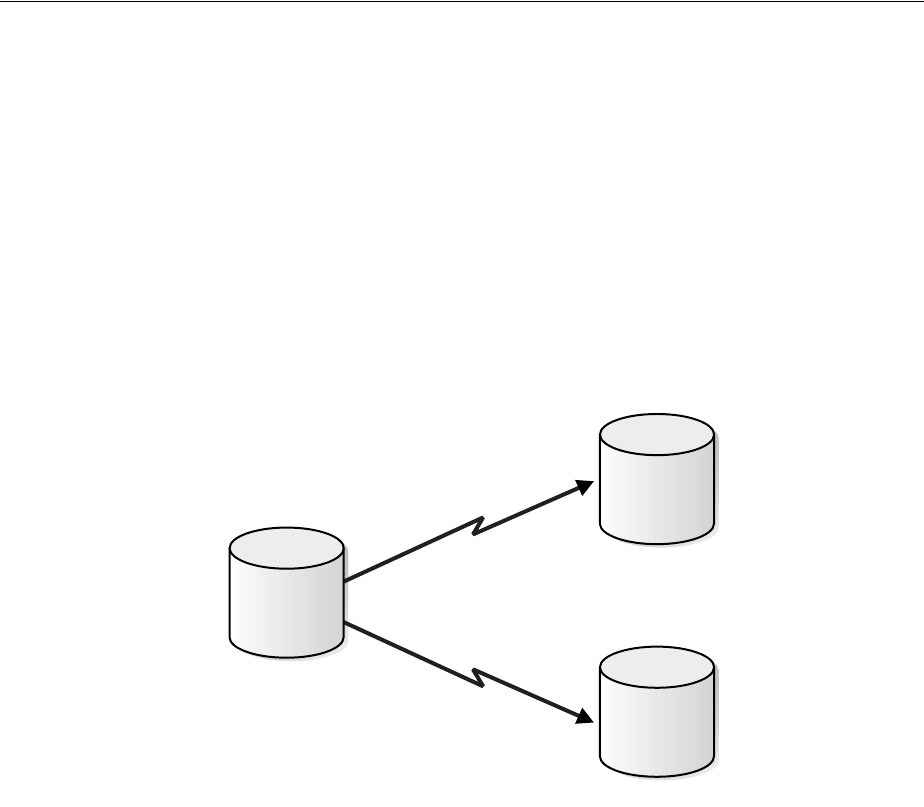

- Specifying Archive Destinations

- About Log Transmission Modes

- Managing Archive Destination Failure

- Controlling Trace Output Generated by the Archivelog Process

- Viewing Information About the Archived Redo Log

- 13 Managing Tablespaces

- Guidelines for Managing Tablespaces

- Creating Tablespaces

- Consider Storing Tablespaces in the In-Memory Column Store

- Specifying Nonstandard Block Sizes for Tablespaces

- Controlling the Writing of Redo Records

- Altering Tablespace Availability

- Using Read-Only Tablespaces

- Altering and Maintaining Tablespaces

- Renaming Tablespaces

- Dropping Tablespaces

- Managing the SYSAUX Tablespace

- Diagnosing and Repairing Locally Managed Tablespace Problems

- Scenario 1: Fixing Bitmap When Allocated Blocks are Marked Free (No Overlap)

- Scenario 2: Dropping a Corrupted Segment

- Scenario 3: Fixing Bitmap Where Overlap is Reported

- Scenario 4: Correcting Media Corruption of Bitmap Blocks

- Scenario 5: Migrating from a Dictionary-Managed to a Locally Managed Tablespace

- Migrating the SYSTEM Tablespace to a Locally Managed Tablespace

- Tablespace Data Dictionary Views

- 14 Managing Data Files and Temp Files

- Guidelines for Managing Data Files

- Creating Data Files and Adding Data Files to a Tablespace

- Changing Data File Size

- Altering Data File Availability

- Renaming and Relocating Data Files

- Dropping Data Files

- Verifying Data Blocks in Data Files

- Copying Files Using the Database Server

- Mapping Files to Physical Devices

- Data Files Data Dictionary Views

- 15 Transporting Data

- About Transporting Data

- Transporting Databases

- Transporting Tablespaces Between Databases

- Transporting Tables, Partitions, or Subpartitions Between Databases

- Converting Data Between Platforms

- Guidelines for Transferring Data Files

- 16 Managing Undo

- 17 Using Oracle Managed Files

- What Are Oracle Managed Files?

- Enabling the Creation and Use of Oracle Managed Files

- Creating Oracle Managed Files

- How Oracle Managed Files Are Named

- Creating Oracle Managed Files at Database Creation

- Specifying Control Files at Database Creation

- Specifying Redo Log Files at Database Creation

- Specifying the SYSTEM and SYSAUX Tablespace Data Files at Database Creation

- Specifying the Undo Tablespace Data File at Database Creation

- Specifying the Default Temporary Tablespace Temp File at Database Creation

- CREATE DATABASE Statement Using Oracle Managed Files: Examples

- Creating Data Files for Tablespaces Using Oracle Managed Files

- Creating Temp Files for Temporary Tablespaces Using Oracle Managed Files

- Creating Control Files Using Oracle Managed Files

- Creating Redo Log Files Using Oracle Managed Files

- Creating Archived Logs Using Oracle Managed Files

- Operation of Oracle Managed Files

- Scenarios for Using Oracle Managed Files

- Part III Schema Objects

- 18 Managing Schema Objects

- Creating Multiple Tables and Views in a Single Operation

- Analyzing Tables, Indexes, and Clusters

- Truncating Tables and Clusters

- Enabling and Disabling Triggers

- Managing Integrity Constraints

- Renaming Schema Objects

- Managing Object Dependencies

- Managing Object Name Resolution

- Switching to a Different Schema

- Managing Editions

- Displaying Information About Schema Objects

- 19 Managing Space for Schema Objects

- Managing Tablespace Alerts

- Managing Resumable Space Allocation

- Reclaiming Unused Space

- Dropping Unused Object Storage

- Understanding Space Usage of Data Types

- Displaying Information About Space Usage for Schema Objects

- Capacity Planning for Database Objects

- 20 Managing Tables

- About Tables

- Guidelines for Managing Tables

- Design Tables Before Creating Them

- Specify the Type of Table to Create

- Specify the Location of Each Table

- Consider Parallelizing Table Creation

- Consider Using NOLOGGING When Creating Tables

- Consider Using Table Compression

- Examples Related to Table Compression

- Compression and Partitioned Tables

- Determining If a Table Is Compressed

- Determining Which Rows Are Compressed

- Changing the Compression Level

- Adding and Dropping Columns in Compressed Tables

- Exporting and Importing Hybrid Columnar Compression Tables

- Restoring a Hybrid Columnar Compression Table

- Notes and Restrictions for Compressed Tables

- Packing Compressed Tables

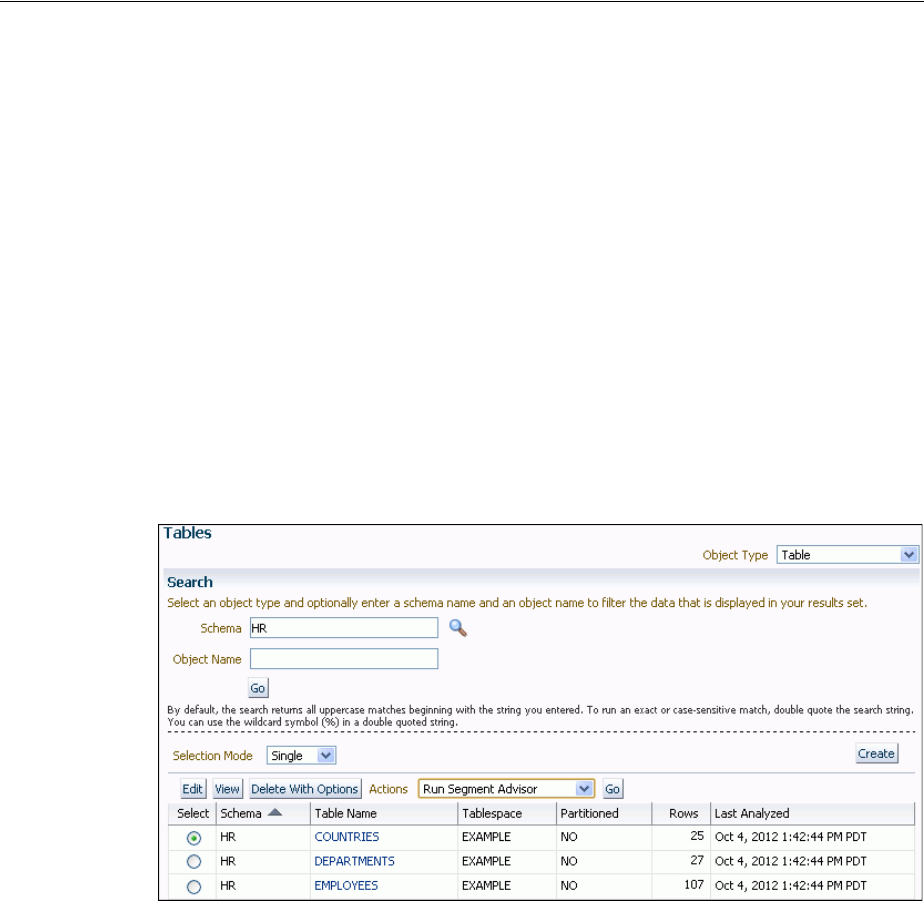

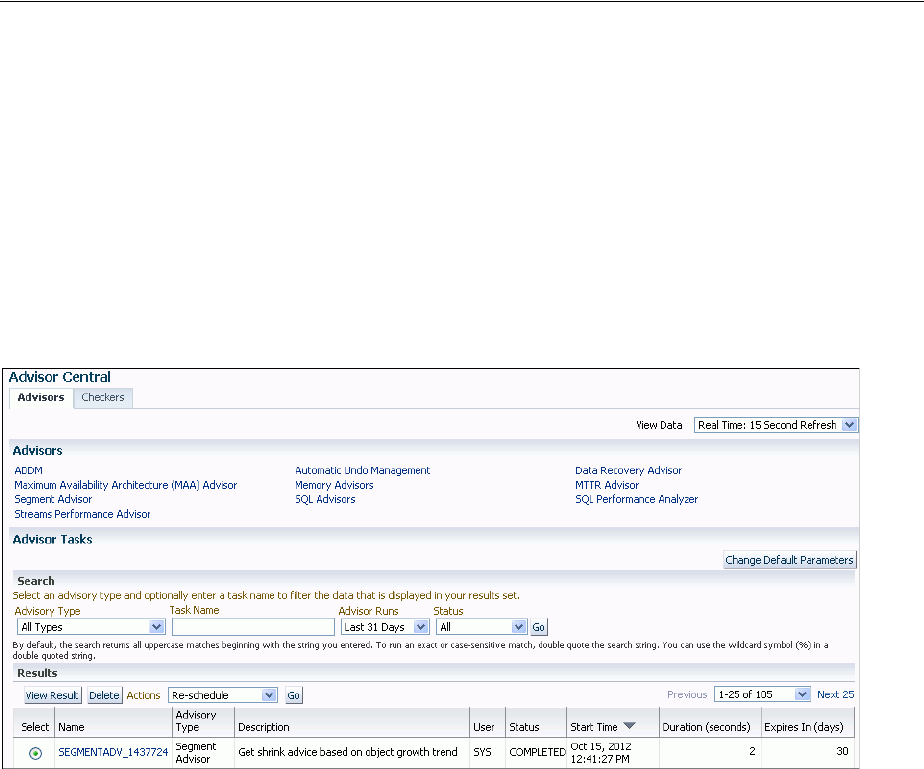

- Managing Table Compression Using Enterprise Manager Cloud Control

- Consider Using Segment-Level and Row-Level Compression Tiering

- Consider Using Attribute-Clustered Tables

- Consider Using Zone Maps

- Consider Storing Tables in the In-Memory Column Store

- Understand Invisible Columns

- Consider Encrypting Columns That Contain Sensitive Data

- Understand Deferred Segment Creation

- Materializing Segments

- Estimate Table Size and Plan Accordingly

- Restrictions to Consider When Creating Tables

- Creating Tables

- Loading Tables

- Methods for Loading Tables

- Improving INSERT Performance with Direct-Path INSERT

- Using Conventional Inserts to Load Tables

- Avoiding Bulk INSERT Failures with DML Error Logging

- Automatically Collecting Statistics on Tables

- Altering Tables

- Reasons for Using the ALTER TABLE Statement

- Altering Physical Attributes of a Table

- Moving a Table to a New Segment or Tablespace

- Manually Allocating Storage for a Table

- Modifying an Existing Column Definition

- Adding Table Columns

- Renaming Table Columns

- Dropping Table Columns

- Placing a Table in Read-Only Mode

- Redefining Tables Online

- Features of Online Table Redefinition

- Performing Online Redefinition with the REDEF_TABLE Procedure

- Performing Online Redefinition with Multiple Procedures in DBMS_REDEFINITION

- Results of the Redefinition Process

- Performing Intermediate Synchronization

- Aborting Online Table Redefinition and Cleaning Up After Errors

- Restrictions for Online Redefinition of Tables

- Online Redefinition of One or More Partitions

- Online Table Redefinition Examples

- Privileges Required for the DBMS_REDEFINITION Package

- Researching and Reversing Erroneous Table Changes

- Recovering Tables Using Oracle Flashback Table

- Dropping Tables

- Using Flashback Drop and Managing the Recycle Bin

- Managing Index-Organized Tables

- Managing External Tables

- Tables Data Dictionary Views

- 21 Managing Indexes

- About Indexes

- Guidelines for Managing Indexes

- Create Indexes After Inserting Table Data

- Index the Correct Tables and Columns

- Order Index Columns for Performance

- Limit the Number of Indexes for Each Table

- Drop Indexes That Are No Longer Required

- Indexes and Deferred Segment Creation

- Estimate Index Size and Set Storage Parameters

- Specify the Tablespace for Each Index

- Consider Parallelizing Index Creation

- Consider Creating Indexes with NOLOGGING

- Understand When to Use Unusable or Invisible Indexes

- Understand When to Create Multiple Indexes on the Same Set of Columns

- Consider Costs and Benefits of Coalescing or Rebuilding Indexes

- Consider Cost Before Disabling or Dropping Constraints

- Consider Using the In-Memory Column Store to Reduce the Number of Indexes

- Creating Indexes

- Creating an Index Explicitly

- Creating a Unique Index Explicitly

- Creating an Index Associated with a Constraint

- Creating a Large Index

- Creating an Index Online

- Creating a Function-Based Index

- Creating a Compressed Index

- Creating an Unusable Index

- Creating an Invisible Index

- Creating Multiple Indexes on the Same Set of Columns

- Altering Indexes

- Monitoring Space Use of Indexes

- Dropping Indexes

- Indexes Data Dictionary Views

- 22 Managing Clusters

- 23 Managing Hash Clusters

- 24 Managing Views, Sequences, and Synonyms

- 25 Repairing Corrupted Data

- 18 Managing Schema Objects

- Part IV Database Resource Management and Task Scheduling

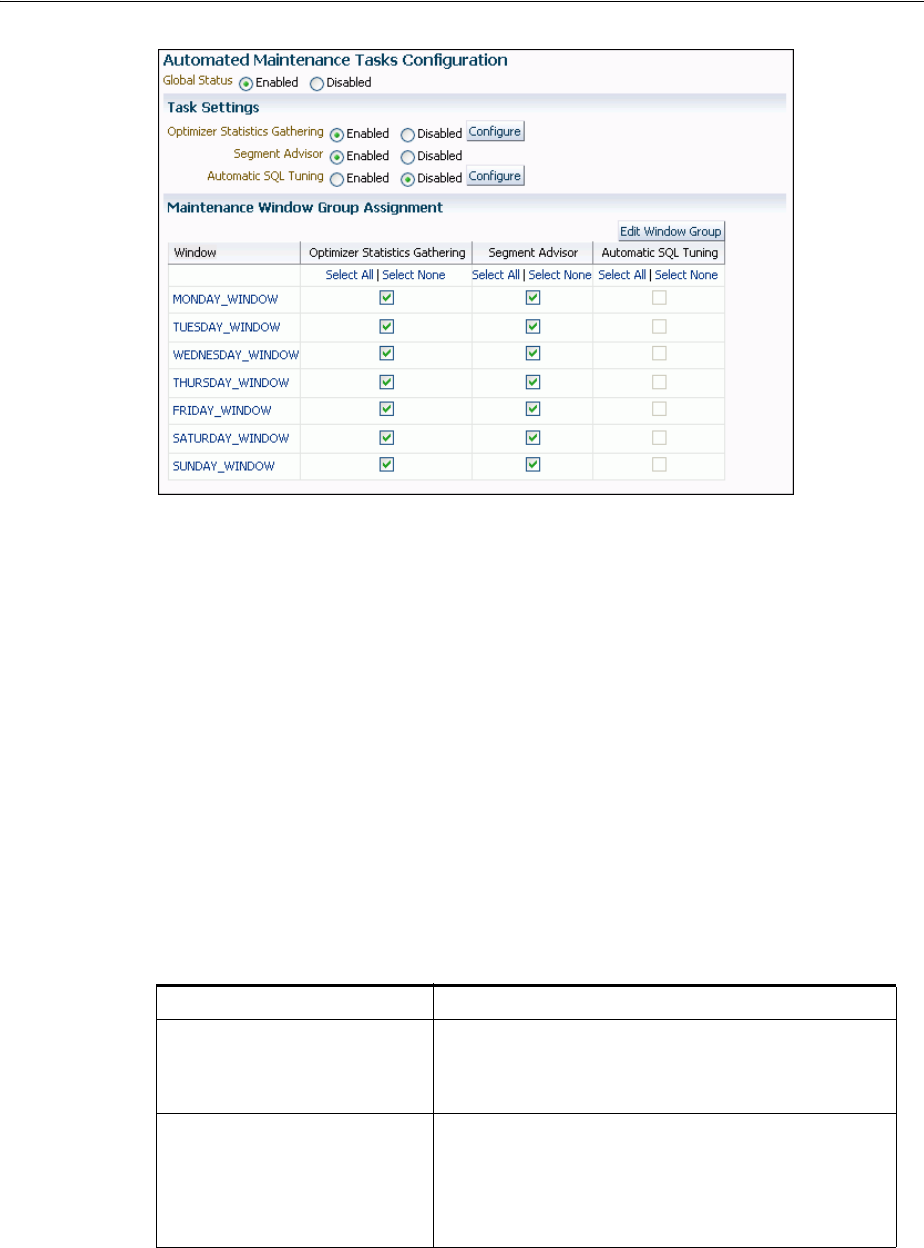

- 26 Managing Automated Database Maintenance Tasks

- 27 Managing Resources with Oracle Database Resource Manager

- About Oracle Database Resource Manager

- Assigning Sessions to Resource Consumer Groups

- The Types of Resources Managed by the Resource Manager

- Creating a Simple Resource Plan

- Creating a Complex Resource Plan

- Enabling Oracle Database Resource Manager and Switching Plans

- Putting It All Together: Oracle Database Resource Manager Examples

- Managing Multiple Database Instances on a Single Server

- Maintaining Consumer Groups, Plans, and Directives

- Viewing Database Resource Manager Configuration and Status

- Monitoring Oracle Database Resource Manager

- Interacting with Operating-System Resource Control

- Oracle Database Resource Manager Reference

- 28 Oracle Scheduler Concepts

- 29 Scheduling Jobs with Oracle Scheduler

- About Scheduler Objects and Their Naming

- Creating, Running, and Managing Jobs

- Job Tasks and Their Procedures

- Creating Jobs

- Overview of Creating Jobs

- Specifying Job Actions, Schedules, Programs, and Styles

- Specifying Scheduler Job Credentials

- Specifying Destinations

- Creating Multiple-Destination Jobs

- Setting Job Arguments

- Setting Additional Job Attributes

- Creating Detached Jobs

- Creating Multiple Jobs in a Single Transaction

- Techniques for External Jobs

- Altering Jobs

- Running Jobs

- Stopping Jobs

- Dropping Jobs

- Disabling Jobs

- Enabling Jobs

- Copying Jobs

- Creating and Managing Programs to Define Jobs

- Creating and Managing Schedules to Define Jobs

- Using Events to Start Jobs

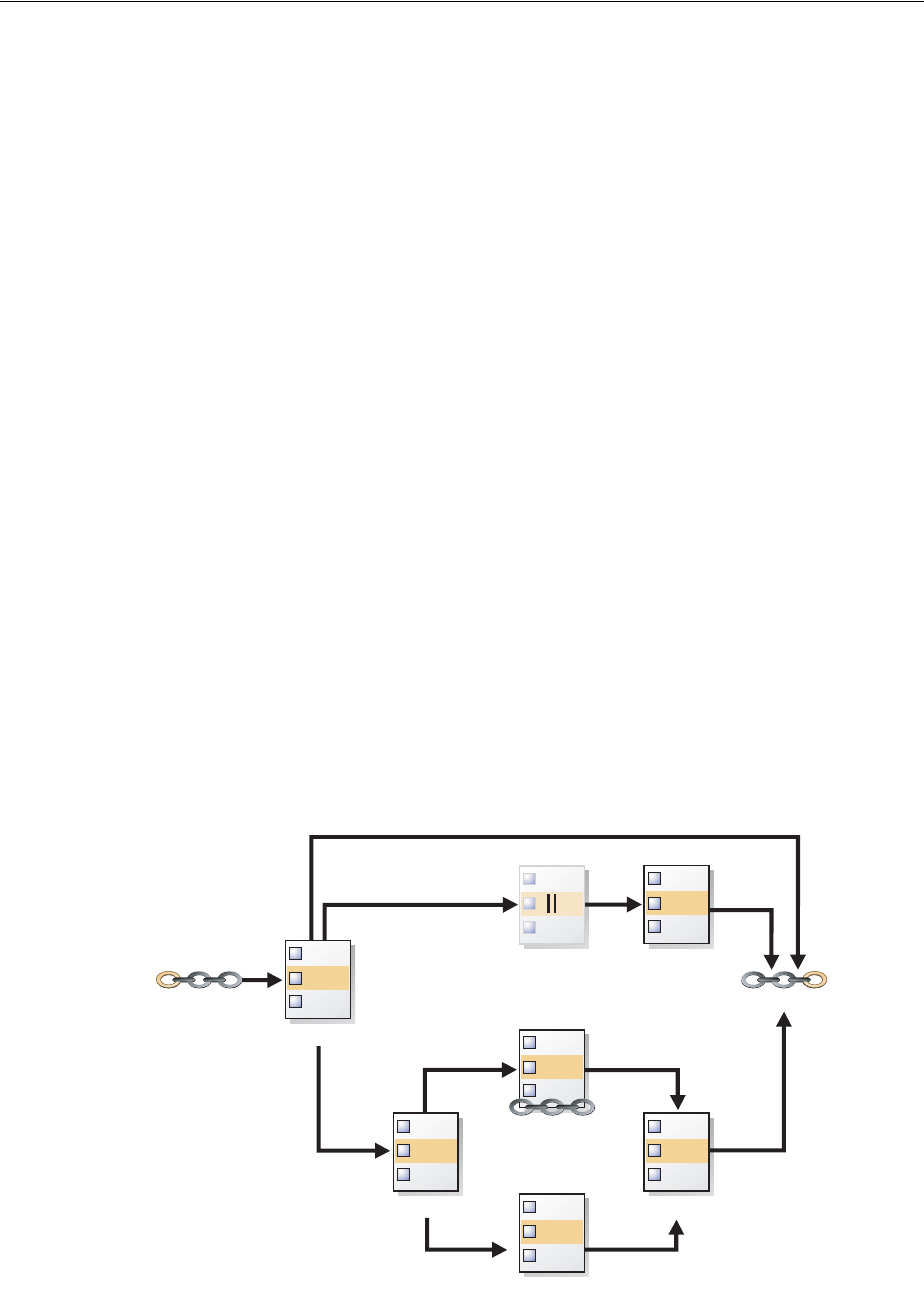

- Creating and Managing Job Chains

- Chain Tasks and Their Procedures

- Creating Chains

- Defining Chain Steps

- Adding Rules to a Chain

- Enabling Chains

- Creating Jobs for Chains

- Dropping Chains

- Running Chains

- Dropping Chain Rules

- Disabling Chains

- Dropping Chain Steps

- Stopping Chains

- Stopping Individual Chain Steps

- Pausing Chains

- Skipping Chain Steps

- Running Part of a Chain

- Monitoring Running Chains

- Handling Stalled Chains

- Prioritizing Jobs

- Monitoring Jobs

- 30 Administering Oracle Scheduler

- Configuring Oracle Scheduler

- Monitoring and Managing the Scheduler

- Import/Export and the Scheduler

- Troubleshooting the Scheduler

- Examples of Using the Scheduler

- Scheduler Reference

- Part V Distributed Database Management

- 31 Distributed Database Concepts

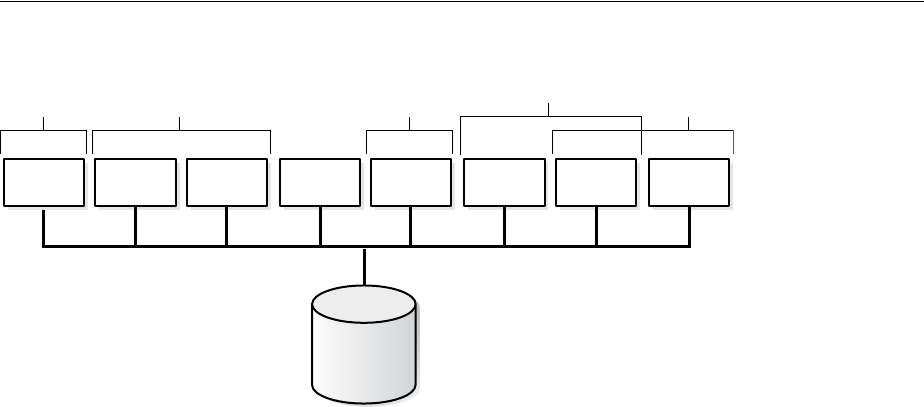

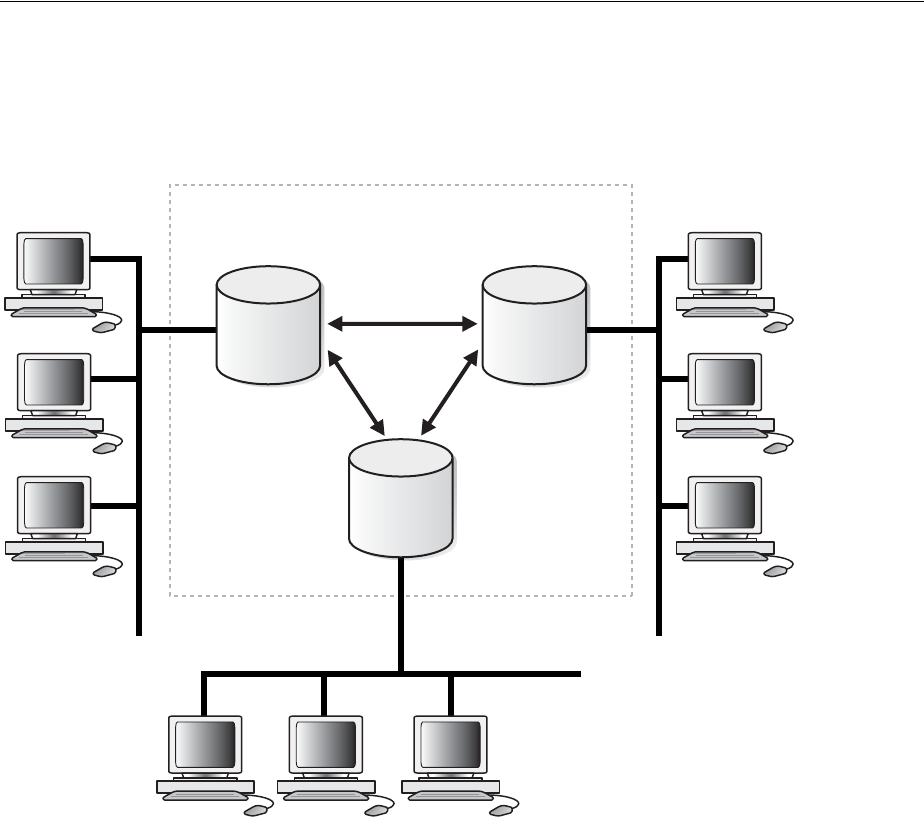

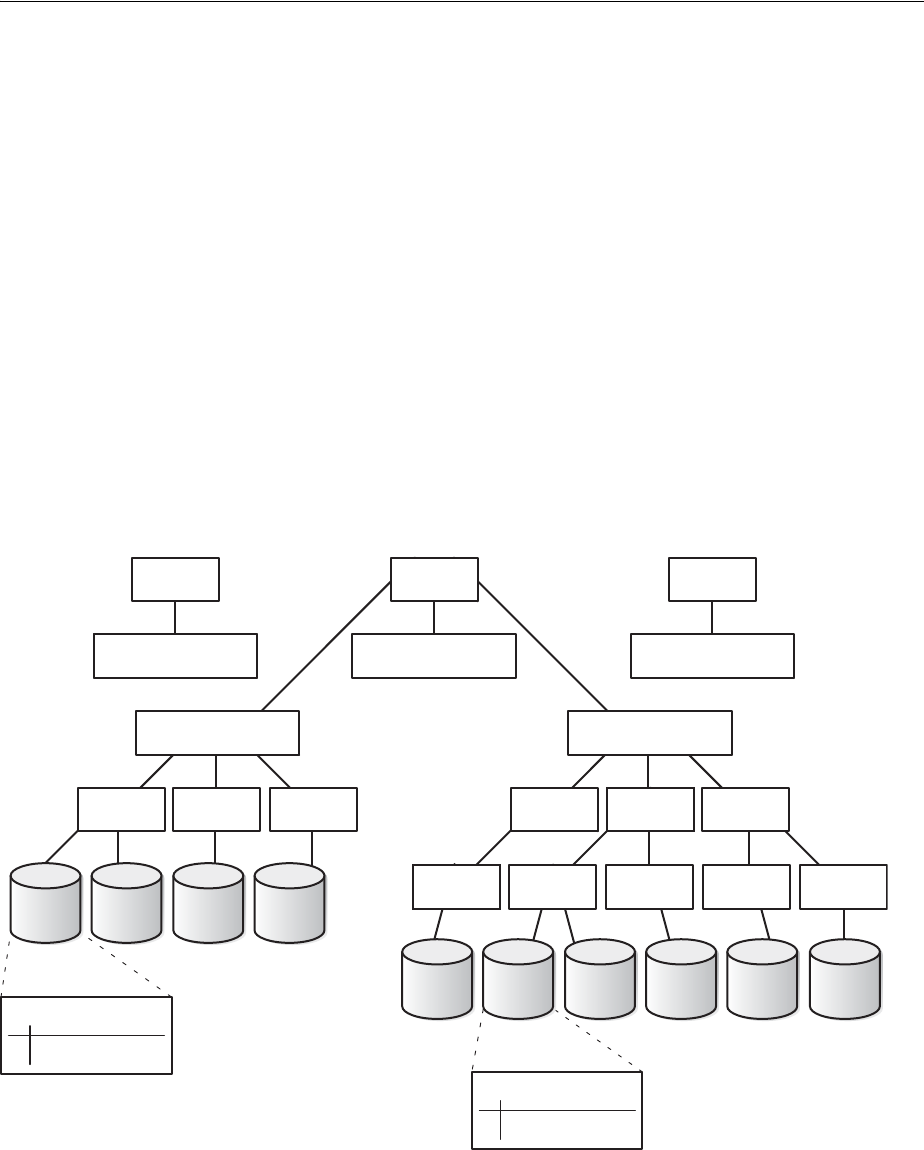

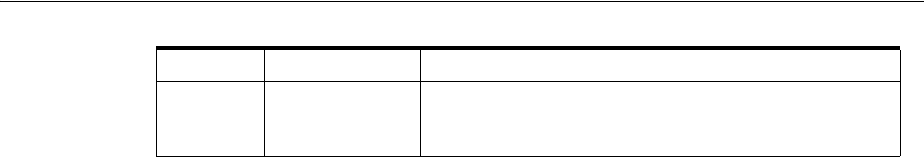

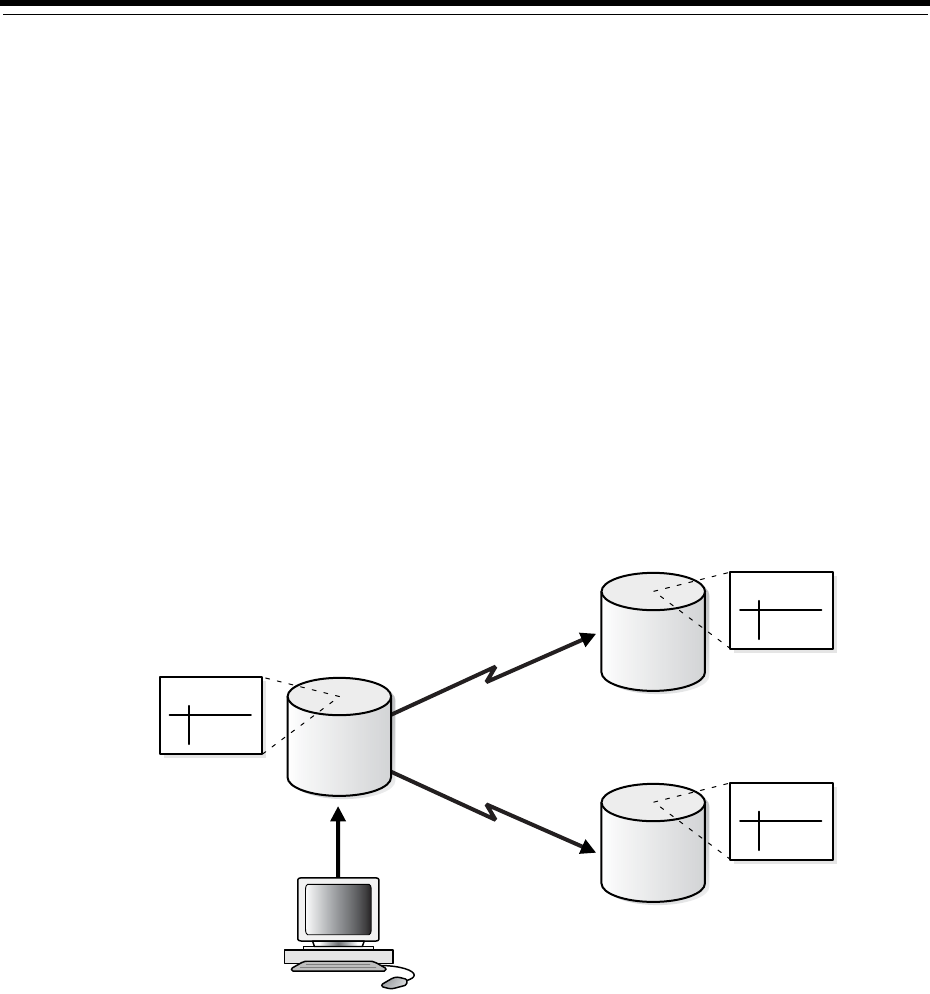

- Distributed Database Architecture

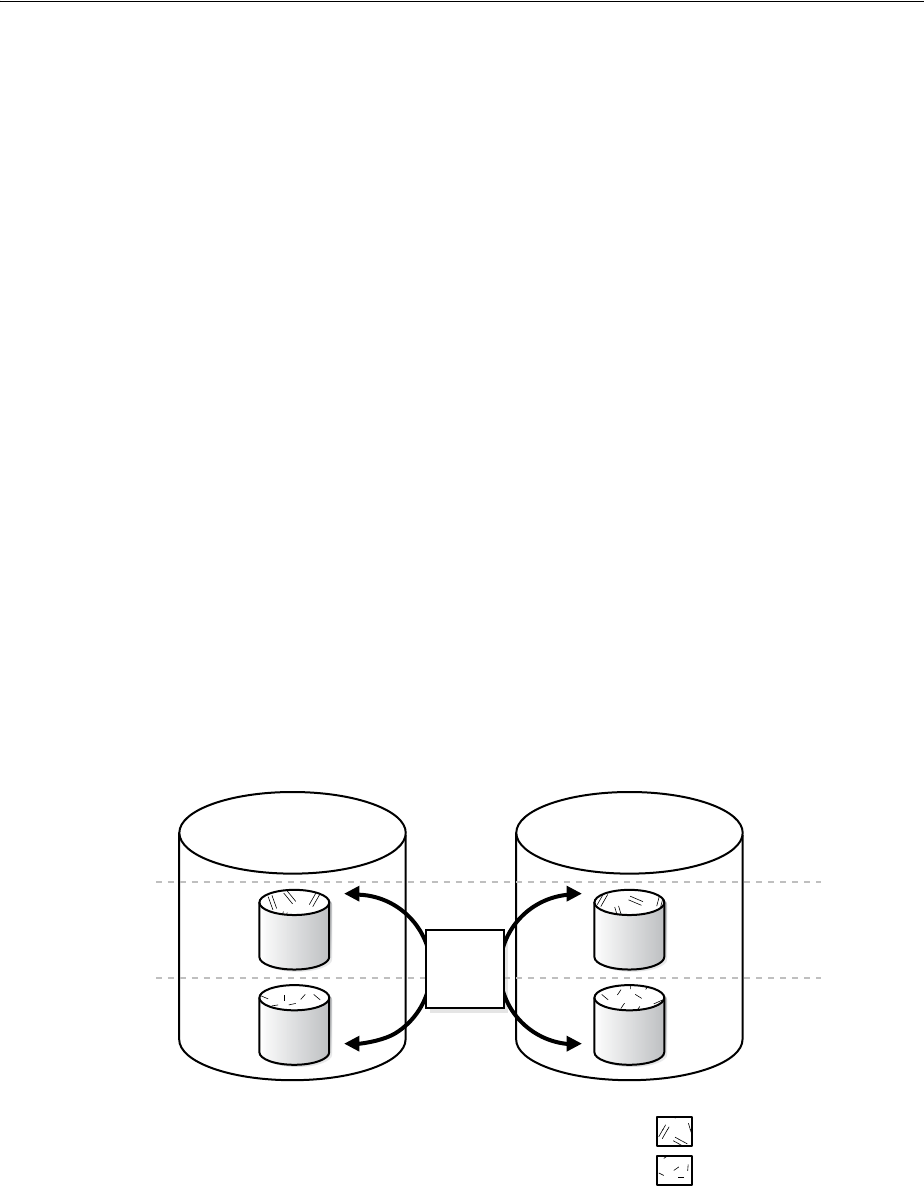

- Database Links

- Distributed Database Administration

- Transaction Processing in a Distributed System

- Distributed Database Application Development

- Character Set Support for Distributed Environments

- 32 Managing a Distributed Database

- Managing Global Names in a Distributed System

- Creating Database Links

- Using Shared Database Links

- Managing Database Links

- Viewing Information About Database Links

- Creating Location Transparency

- Managing Statement Transparency

- Managing a Distributed Database: Examples

- 33 Developing Applications for a Distributed Database System

- Managing the Distribution of Application Data

- Controlling Connections Established by Database Links

- Maintaining Referential Integrity in a Distributed System

- Tuning Distributed Queries

- Handling Errors in Remote Procedures

- 34 Distributed Transactions Concepts

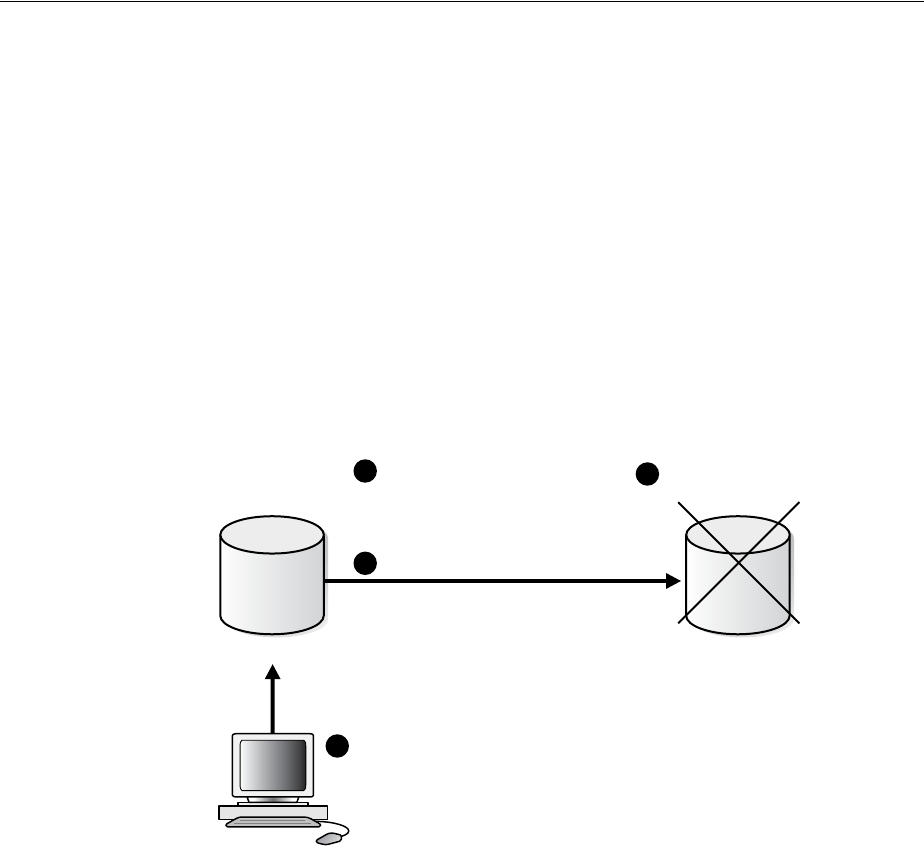

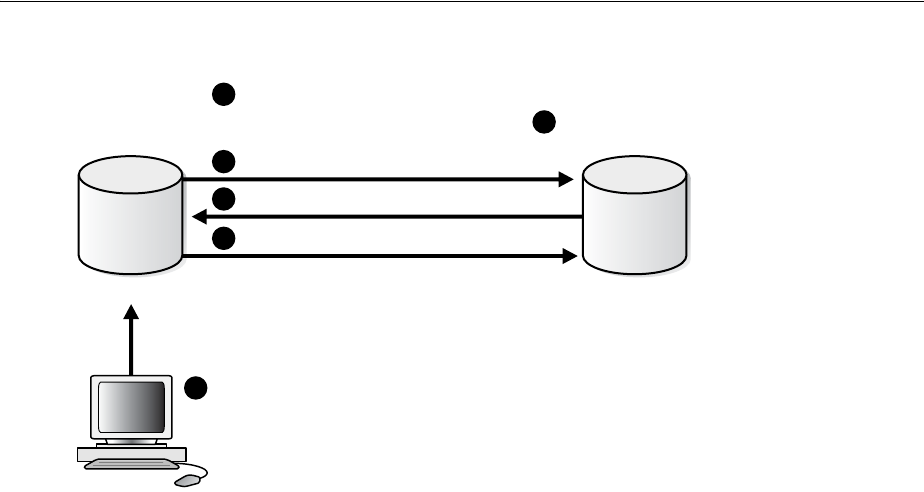

- What Are Distributed Transactions?

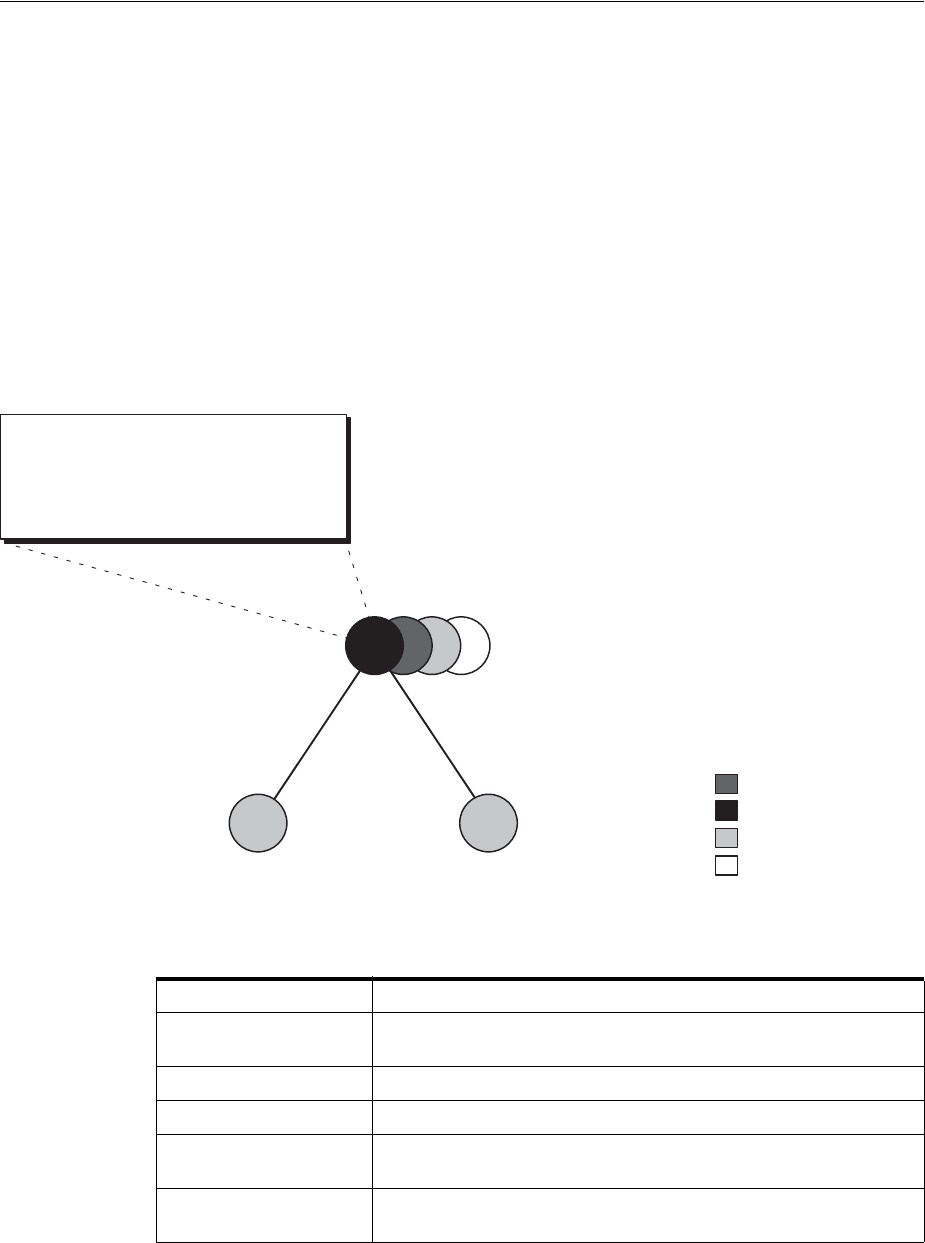

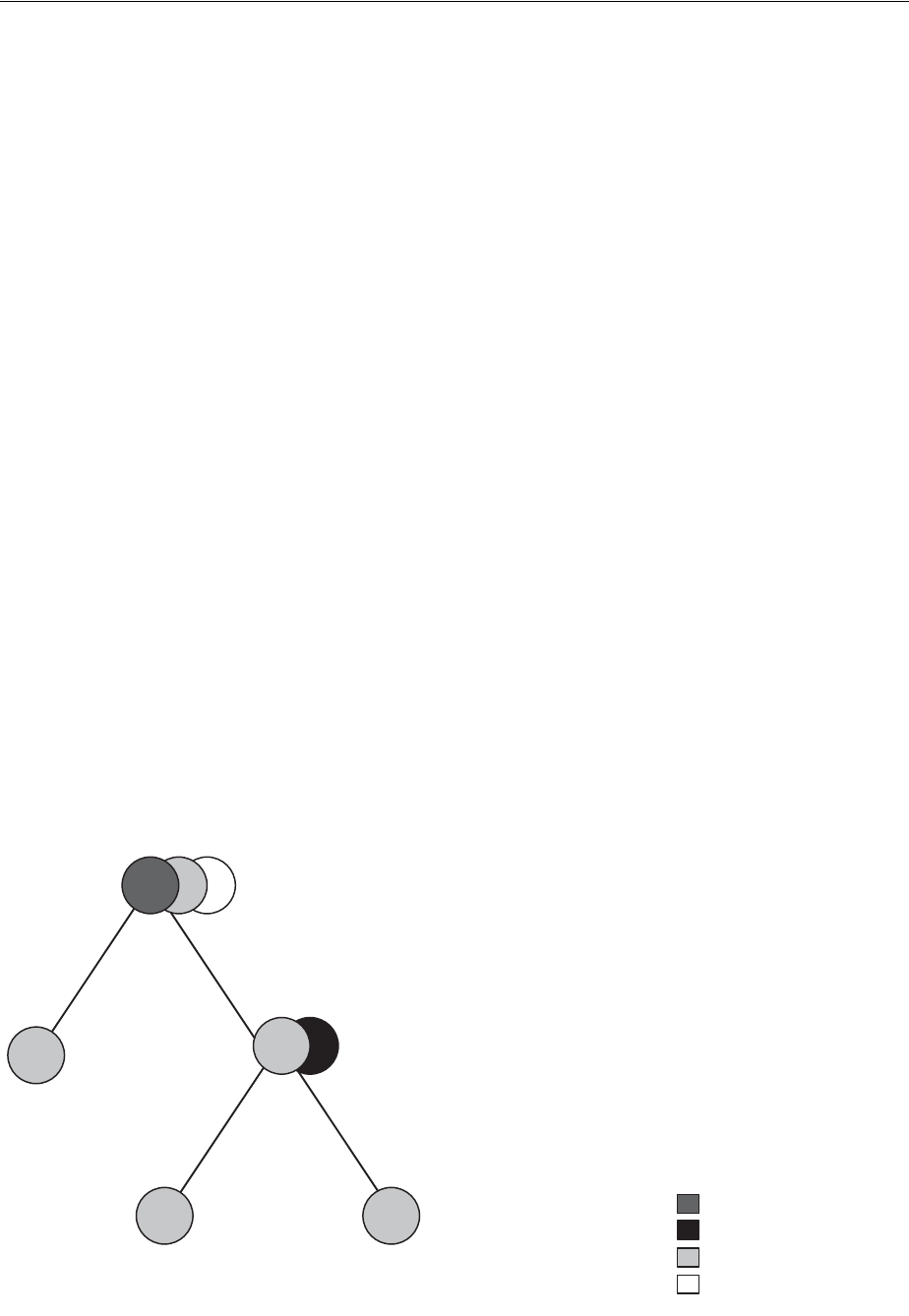

- Session Trees for Distributed Transactions

- Two-Phase Commit Mechanism

- In-Doubt Transactions

- Distributed Transaction Processing: Case Study

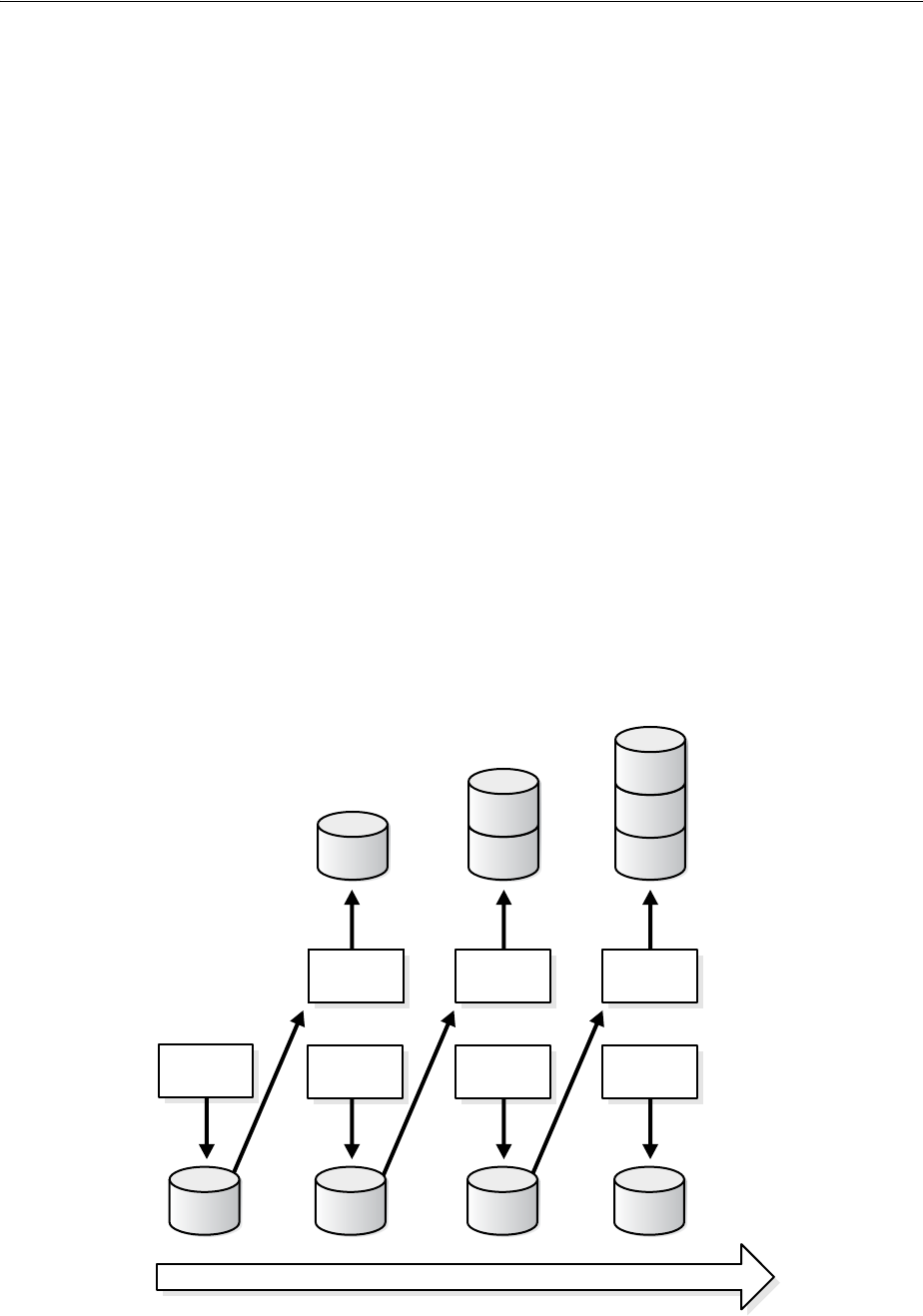

- Stage 1: Client Application Issues DML Statements

- Stage 2: Oracle Database Determines Commit Point Site

- Stage 3: Global Coordinator Sends Prepare Response

- Stage 4: Commit Point Site Commits

- Stage 5: Commit Point Site Informs Global Coordinator of Commit

- Stage 6: Global and Local Coordinators Tell All Nodes to Commit

- Stage 7: Global Coordinator and Commit Point Site Complete the Commit

- 35 Managing Distributed Transactions

- Specifying the Commit Point Strength of a Node

- Naming Transactions

- Viewing Information About Distributed Transactions

- Deciding How to Handle In-Doubt Transactions

- Manually Overriding In-Doubt Transactions

- Purging Pending Rows from the Data Dictionary

- Manually Committing an In-Doubt Transaction: Example

- Data Access Failures Due to Locks

- Simulating Distributed Transaction Failure

- Managing Read Consistency

- 31 Distributed Database Concepts

- Part VI Managing a Multitenant Environment

- 36 Overview of Managing a Multitenant Environment

- 37 Creating and Configuring a CDB

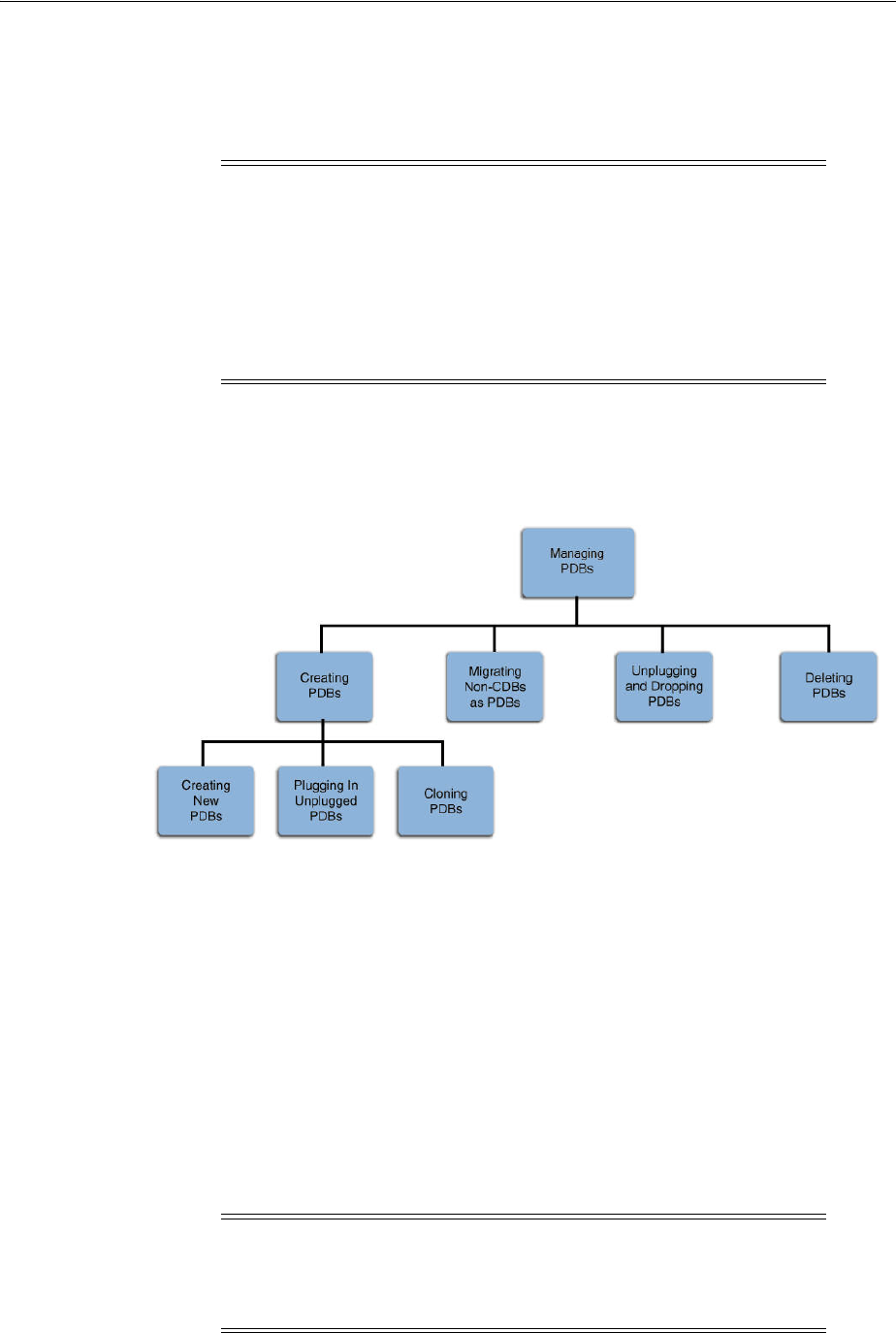

- 38 Creating and Removing PDBs with SQL*Plus

- About Creating and Removing PDBs

- Preparing for PDBs

- Creating a PDB Using the Seed

- Creating a PDB by Cloning an Existing PDB or Non-CDB

- Creating a PDB by Plugging an Unplugged PDB into a CDB

- Creating a PDB Using a Non-CDB

- Unplugging a PDB from a CDB

- Dropping a PDB

- 39 Creating and Removing PDBs with Cloud Control

- 40 Administering a CDB with SQL*Plus

- About Administering a CDB

- Accessing a Container in a CDB with SQL*Plus

- Executing Code in Containers Using the DBMS_SQL Package

- Modifying a CDB

- Using the ALTER SYSTEM SET Statement in a CDB

- Executing DDL Statements in a CDB

- Running Oracle-Supplied SQL Scripts in a CDB

- Shutting Down a CDB Instance

- 41 Administering CDBs and PDBs with Cloud Control

- 42 Administering PDBs with SQL*Plus

- 43 Viewing Information About CDBs and PDBs with SQL*Plus

- About CDB and PDB Information in Views

- Views for a CDB

- Determining Whether a Database Is a CDB

- Viewing Information About the Containers in a CDB

- Viewing Information About PDBs

- Viewing the Open Mode of Each PDB

- Querying Container Data Objects

- Querying User-Created Tables and Views Across All PDBs

- Determining the Current Container ID or Name

- Listing the Initialization Parameters That Are Modifiable in PDBs

- Viewing the History of PDBs

- 44 Using Oracle Resource Manager for PDBs with SQL*Plus

- About Using Oracle Resource Manager with CDBs and PDBs

- Prerequisites for Using Resource Manager with a CDB

- Creating a CDB Resource Plan

- Enabling and Disabling a CDB Resource Plan

- Creating a PDB Resource Plan

- Enabling and Disabling a PDB Resource Plan

- Maintaining Plans and Directives in a CDB

- Managing a CDB Resource Plan

- Updating a CDB Resource Plan

- Creating New CDB Resource Plan Directives for a PDB

- Updating CDB Resource Plan Directives for a PDB

- Deleting CDB Resource Plan Directives for a PDB

- Updating the Default Directive for PDBs in a CDB Resource Plan

- Updating the Default Directive for Maintenance Tasks in a CDB Resource Plan

- Deleting a CDB Resource Plan

- Modifying a PDB Resource Plan

- Managing a CDB Resource Plan

- Viewing Information About Plans and Directives in a CDB

- 45 Using Oracle Resource Manager for PDBs with Cloud Control

- 46 Using Oracle Scheduler with a CDB

- Part VII Appendixes

- Index

[1]

Oracle® Database

Administrator's Guide

12c Release 1 (12.1)

E41484-10

August 2014

Oracle Database Administrator's Guide, 12c Release 1 (12.1)

E41484-10

Copyright © 2001, 2014, Oracle and/or its affiliates. All rights reserved.

Primary Author: Randy Urbano

Contributor: The Oracle Database 12c documentation is dedicated to Mark Townsend, who was an

inspiration to all who worked on this release.

Contributing Author: Leo Cloutier, Steve Fogel, Pradeep Gopal, Janis Greenberg

Contributors: A. Agrawal, L. Ashdown, P. Avril, D. Austin, B. Baddepudi, H. Baer, C. Baird, R. Balwada, S.

Battula, M. Bauer, T. Bednar, E. Belden, J. Byun, D. Chatterjee, A. Chaudhry, B. Cheng, H. Chien, T. Chien, G.

Christman, C. C. Chui, G. Claborn, C. Colrain, K. Cook, J. Creighton, A. Dadhich, S. Datta, S. Davidson, M.

Dilman, J. Draaijer, M. Fallen, D. Gagne, A. Ganesh, GP Gongloor, F. Gonzales, J. Gonzalez, V. Goorah, S.

Gopalan, S. Gupta, B. Habeck, S. Hase, M. Hayasaka, W. Hodak, W. Hu, P. Huey, C. Iyer, K. Itikarlapalli, S.

Jain, K. Jernigan, S. Joshi, B. Khaladkar, B. Krishnan, V. Krishnaswamy, A. Kruglikov, B. Kuchibhotla, S.

Kumar, V. Kumar, H. Lakshmanan, P. Lane, A. Lee, B. Lee, J. Lee, S. K. Lee, C. Lei, B. Leung, Y. Li, I.

Listvinsky, B. Llewellyn, B. Lundhild, S. Lynn, R. Mani, V. Marwah, C. McGregor, B. McGuirk, K. Mensah, E.

Miner, M. Minhas, K. Mohan, P. Murguia, A. Mylavarapu, V. Moore, N. Mukherjee, S. Muthulingam, G.

Ngai, L. Nim, S. Panchumarthy, R. Pang, V. Panteleenko, R. Pfau, R. Pingte, K. Rajamani, A. Raghavan, M.

Ramacher, R. Ramkissoon, S. Ravindhran, A. Ray, W. Ren, K. Rich, S. Sonawane, Y. Sarig, M. Savanur, S.

Shankar, D. Sharma, A. Shen, B. Sinha, J. Spiller, V. Srihari, D. Steiner, J. Stern, M. Stewart, R. Swonger, M.

Subramaniam, M. Susairaj, A. Tran, A. Tsukerman, T. Ueda, K. Umamageswaran, D. Utzig, E. Voss, X. Wang,

M. Wei, S. Wertheimer, P. Wheeler, D. Williams, A. Witkowski, D. M. Wong, Z. Yang, P. Youn, T. F. Yu, W.

Zhang

This software and related documentation are provided under a license agreement containing restrictions on

use and disclosure and are protected by intellectual property laws. Except as expressly permitted in your

license agreement or allowed by law, you may not use, copy, reproduce, translate, broadcast, modify, license,

transmit, distribute, exhibit, perform, publish, or display any part, in any form, or by any means. Reverse

engineering, disassembly, or decompilation of this software, unless required by law for interoperability, is

prohibited.

The information contained herein is subject to change without notice and is not warranted to be error-free. If

you find any errors, please report them to us in writing.

If this is software or related documentation that is delivered to the U.S. Government or anyone licensing it

on behalf of the U.S. Government, the following notice is applicable:

U.S. GOVERNMENT END USERS: Oracle programs, including any operating system, integrated software,

any programs installed on the hardware, and/or documentation, delivered to U.S. Government end users

are "commercial computer software" pursuant to the applicable Federal Acquisition Regulation and

agency-specific supplemental regulations. As such, use, duplication, disclosure, modification, and

adaptation of the programs, including any operating system, integrated software, any programs installed on

the hardware, and/or documentation, shall be subject to license terms and license restrictions applicable to

the programs. No other rights are granted to the U.S. Government.

This software or hardware is developed for general use in a variety of information management

applications. It is not developed or intended for use in any inherently dangerous applications, including

applications that may create a risk of personal injury. If you use this software or hardware in dangerous

applications, then you shall be responsible to take all appropriate fail-safe, backup, redundancy, and other

measures to ensure its safe use. Oracle Corporation and its affiliates disclaim any liability for any damages

caused by use of this software or hardware in dangerous applications.

Oracle and Java are registered trademarks of Oracle and/or its affiliates. Other names may be trademarks of

their respective owners.

Intel and Intel Xeon are trademarks or registered trademarks of Intel Corporation. All SPARC trademarks

are used under license and are trademarks or registered trademarks of SPARC International, Inc. AMD,

Opteron, the AMD logo, and the AMD Opteron logo are trademarks or registered trademarks of Advanced

Micro Devices. UNIX is a registered trademark of The Open Group.

This software or hardware and documentation may provide access to or information on content, products,

and services from third parties. Oracle Corporation and its affiliates are not responsible for and expressly

disclaim all warranties of any kind with respect to third-party content, products, and services. Oracle

Corporation and its affiliates will not be responsible for any loss, costs, or damages incurred due to your

access to or use of third-party content, products, or services.

iii

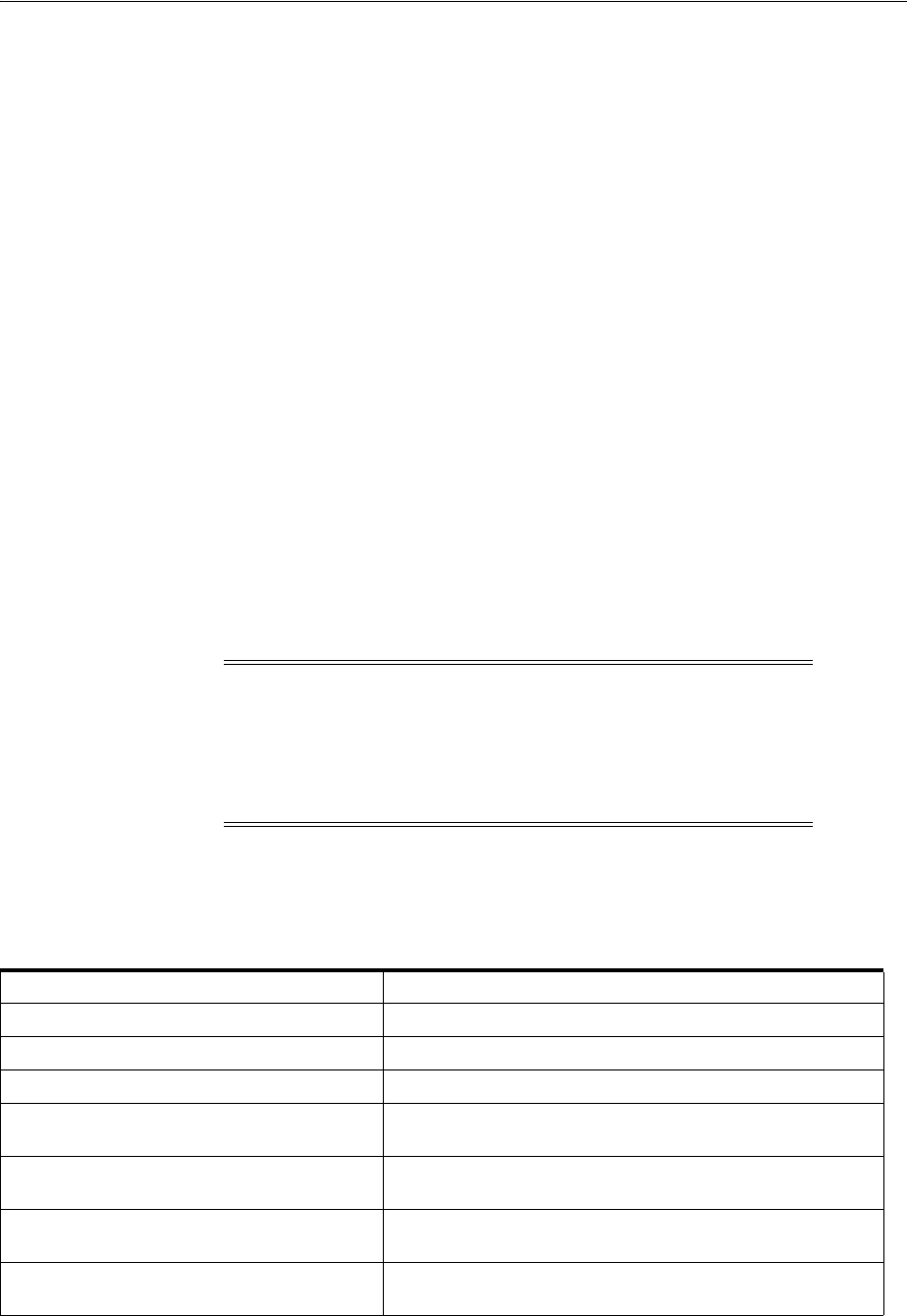

Contents

Preface................................................................................................................................................................ xli

Audience...................................................................................................................................................... xli

Documentation Accessibility.................................................................................................................... xli

Related Documents ................................................................................................................................... xlii

Conventions ............................................................................................................................................... xlii

Changes in This Release for Oracle Database Administrator's Guide..................... xliii

Changes in Oracle Database 12c Release 1 (12.1.0.2)........................................................................... xliii

Changes in Oracle Database 12c Release 1 (12.1.0.1)............................................................................ xlv

Part I Basic Database Administration

1 Getting Started with Database Administration

Types of Oracle Database Users............................................................................................................ 1-1

Database Administrators .................................................................................................................. 1-1

Security Officers ................................................................................................................................. 1-2

Network Administrators................................................................................................................... 1-2

Application Developers..................................................................................................................... 1-2

Application Administrators.............................................................................................................. 1-2

Database Users ................................................................................................................................... 1-3

Tasks of a Database Administrator....................................................................................................... 1-3

Task 1: Evaluate the Database Server Hardware .......................................................................... 1-3

Task 2: Install the Oracle Database Software ................................................................................. 1-3

Task 3: Plan the Database.................................................................................................................. 1-4

Task 4: Create and Open the Database ........................................................................................... 1-4

Task 5: Back Up the Database........................................................................................................... 1-5

Task 6: Enroll System Users.............................................................................................................. 1-5

Task 7: Implement the Database Design......................................................................................... 1-5

Task 8: Back Up the Fully Functional Database ............................................................................ 1-5

Task 9: Tune Database Performance ............................................................................................... 1-5

Task 10: Download and Install Patches .......................................................................................... 1-5

Task 11: Roll Out to Additional Hosts ............................................................................................ 1-6

Submitting Commands and SQL to the Database............................................................................. 1-6

About SQL*Plus.................................................................................................................................. 1-7

Connecting to the Database with SQL*Plus................................................................................... 1-7

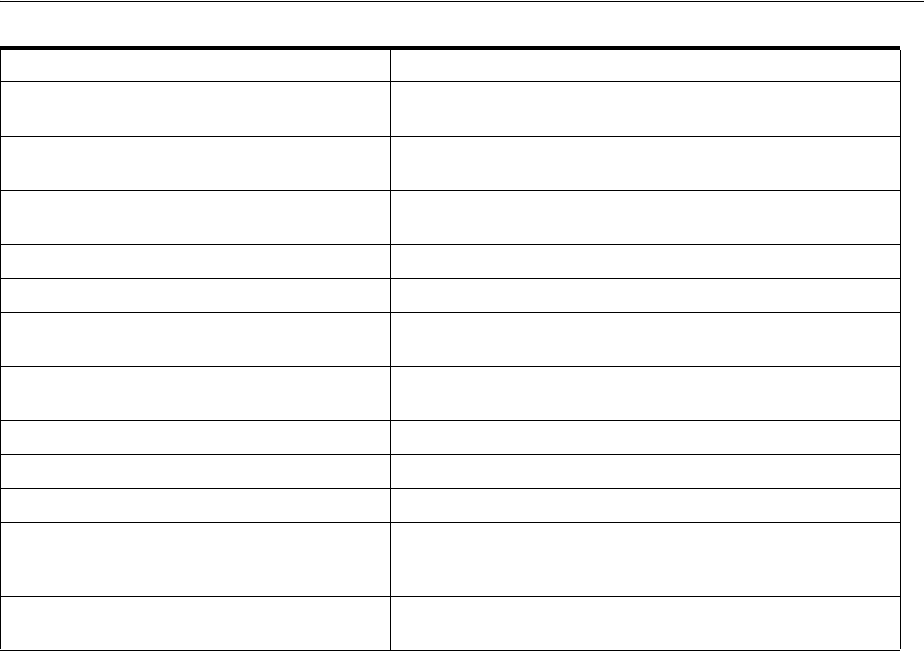

iv

Identifying Your Oracle Database Software Release..................................................................... 1-13

Release Number Format................................................................................................................. 1-13

Checking Your Current Release Number.................................................................................... 1-13

About Database Administrator Security and Privileges ............................................................... 1-14

The Database Administrator's Operating System Account...................................................... 1-14

Administrative User Accounts...................................................................................................... 1-14

Database Administrator Authentication .......................................................................................... 1-17

Administrative Privileges .............................................................................................................. 1-17

Selecting an Authentication Method for Database Administrators ........................................ 1-19

Using Operating System Authentication..................................................................................... 1-21

Using Password File Authentication............................................................................................ 1-23

Creating and Maintaining a Database Password File .................................................................... 1-25

Creating a Database Password File with ORAPWD.................................................................. 1-25

Sharing and Disabling the Database Password File................................................................... 1-30

Adding Users to a Database Password File ................................................................................ 1-32

Maintaining a Database Password File........................................................................................ 1-34

Data Utilities .......................................................................................................................................... 1-34

2 Creating and Configuring an Oracle Database

About Creating an Oracle Database ..................................................................................................... 2-1

Considerations Before Creating the Database ............................................................................... 2-2

Creating a Database with DBCA........................................................................................................... 2-5

Creating a Database with Interactive DBCA.................................................................................. 2-5

Creating a Database with Noninteractive/Silent DBCA.............................................................. 2-5

Creating a Database with the CREATE DATABASE Statement .................................................... 2-6

Step 1: Specify an Instance Identifier (SID) .................................................................................... 2-7

Step 2: Ensure That the Required Environment Variables Are Set............................................. 2-7

Step 3: Choose a Database Administrator Authentication Method............................................ 2-8

Step 4: Create the Initialization Parameter File.............................................................................. 2-8

Step 5: (Windows Only) Create an Instance................................................................................... 2-9

Step 6: Connect to the Instance......................................................................................................... 2-9

Step 7: Create a Server Parameter File ......................................................................................... 2-10

Step 8: Start the Instance ............................................................................................................... 2-11

Step 9: Issue the CREATE DATABASE Statement..................................................................... 2-11

Step 10: Create Additional Tablespaces....................................................................................... 2-15

Step 11: Run Scripts to Build Data Dictionary Views ............................................................... 2-15

Step 12: (Optional) Run Scripts to Install Additional Options ................................................. 2-16

Step 13: Back Up the Database. ..................................................................................................... 2-16

Step 14: (Optional) Enable Automatic Instance Startup............................................................ 2-16

Specifying CREATE DATABASE Statement Clauses ................................................................... 2-16

Protecting Your Database: Specifying Passwords for Users SYS and SYSTEM .................... 2-17

Creating a Locally Managed SYSTEM Tablespace..................................................................... 2-17

About the SYSAUX Tablespace..................................................................................................... 2-18

Using Automatic Undo Management: Creating an Undo Tablespace.................................... 2-19

Creating a Default Permanent Tablespace .................................................................................. 2-19

Creating a Default Temporary Tablespace.................................................................................. 2-19

Specifying Oracle Managed Files at Database Creation............................................................ 2-20

v

Supporting Bigfile Tablespaces During Database Creation...................................................... 2-21

Specifying the Database Time Zone and Time Zone File.......................................................... 2-22

Specifying FORCE LOGGING Mode........................................................................................... 2-23

Specifying Initialization Parameters................................................................................................. 2-24

About Initialization Parameters and Initialization Parameter Files........................................ 2-25

Determining the Global Database Name..................................................................................... 2-27

Specifying a Fast Recovery Area................................................................................................... 2-28

Specifying Control Files ................................................................................................................ 2-28

Specifying Database Block Sizes ................................................................................................... 2-29

Specifying the Maximum Number of Processes......................................................................... 2-30

Specifying the DDL Lock Timeout ............................................................................................... 2-30

Specifying the Method of Undo Space Management ................................................................ 2-31

About The COMPATIBLE Initialization Parameter .................................................................. 2-32

Setting the License Parameter ....................................................................................................... 2-32

Managing Initialization Parameters Using a Server Parameter File........................................... 2-33

What Is a Server Parameter File?.................................................................................................. 2-33

Migrating to a Server Parameter File ........................................................................................... 2-34

Creating a Server Parameter File .................................................................................................. 2-34

The SPFILE Initialization Parameter ............................................................................................ 2-36

Changing Initialization Parameter Values .................................................................................. 2-36

Clearing Initialization Parameter Values..................................................................................... 2-38

Exporting the Server Parameter File ............................................................................................ 2-38

Backing Up the Server Parameter File ......................................................................................... 2-38

Recovering a Lost or Damaged Server Parameter File.............................................................. 2-39

Viewing Parameter Settings .......................................................................................................... 2-39

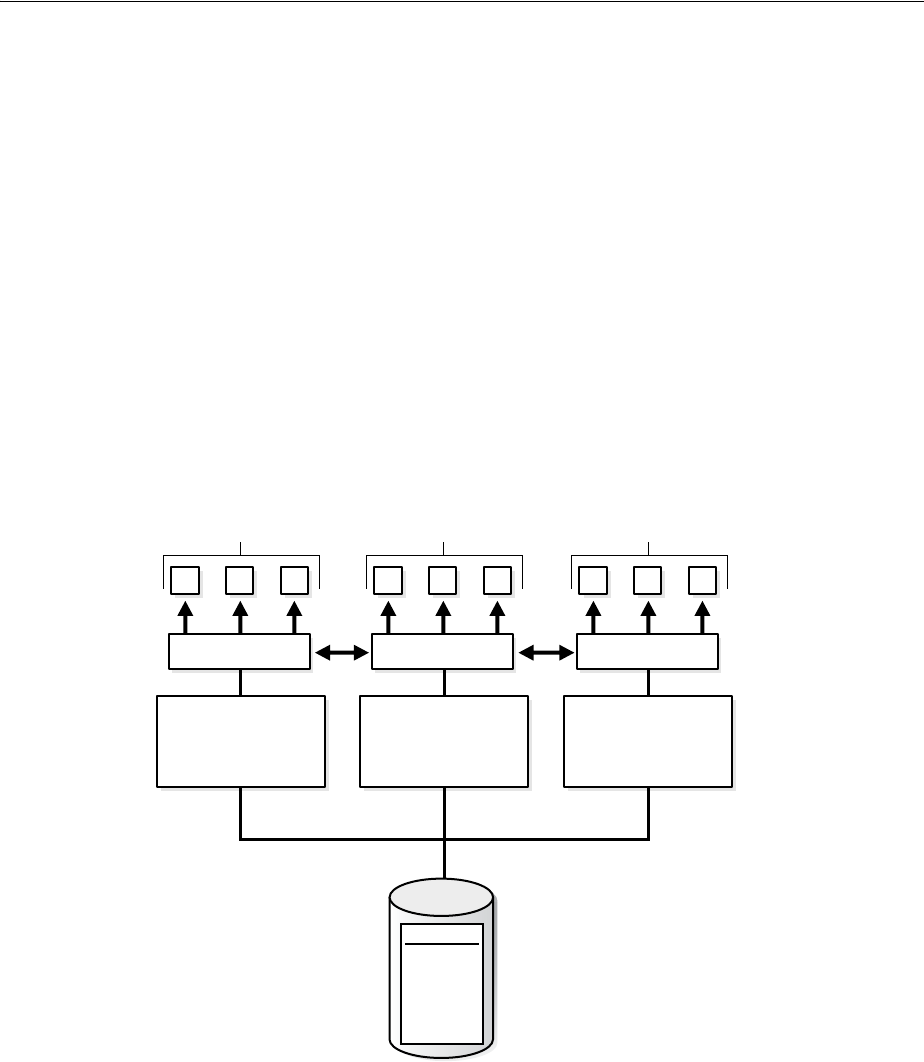

Managing Application Workloads with Database Services ........................................................ 2-40

Database Services............................................................................................................................ 2-40

Global Data Services....................................................................................................................... 2-43

Database Service Data Dictionary Views..................................................................................... 2-44

Considerations After Creating a Database....................................................................................... 2-45

Some Security Considerations....................................................................................................... 2-45

Enabling Transparent Data Encryption ....................................................................................... 2-46

Creating a Secure External Password Store ................................................................................ 2-46

Using Transaction Guard and Application Continuity............................................................. 2-47

Installing the Oracle Database Sample Schemas........................................................................ 2-47

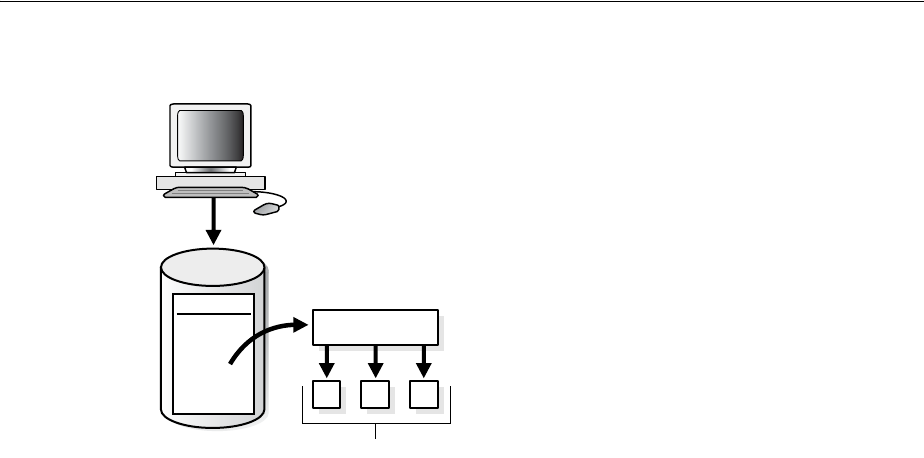

Cloning a Database with CloneDB.................................................................................................... 2-47

About Cloning a Database with CloneDB................................................................................... 2-48

Cloning a Database with CloneDB............................................................................................... 2-48

After Cloning a Database with CloneDB..................................................................................... 2-53

Dropping a Database............................................................................................................................ 2-54

Database Data Dictionary Views ....................................................................................................... 2-54

Database Configuration Assistant Command Reference for Silent Mode................................ 2-55

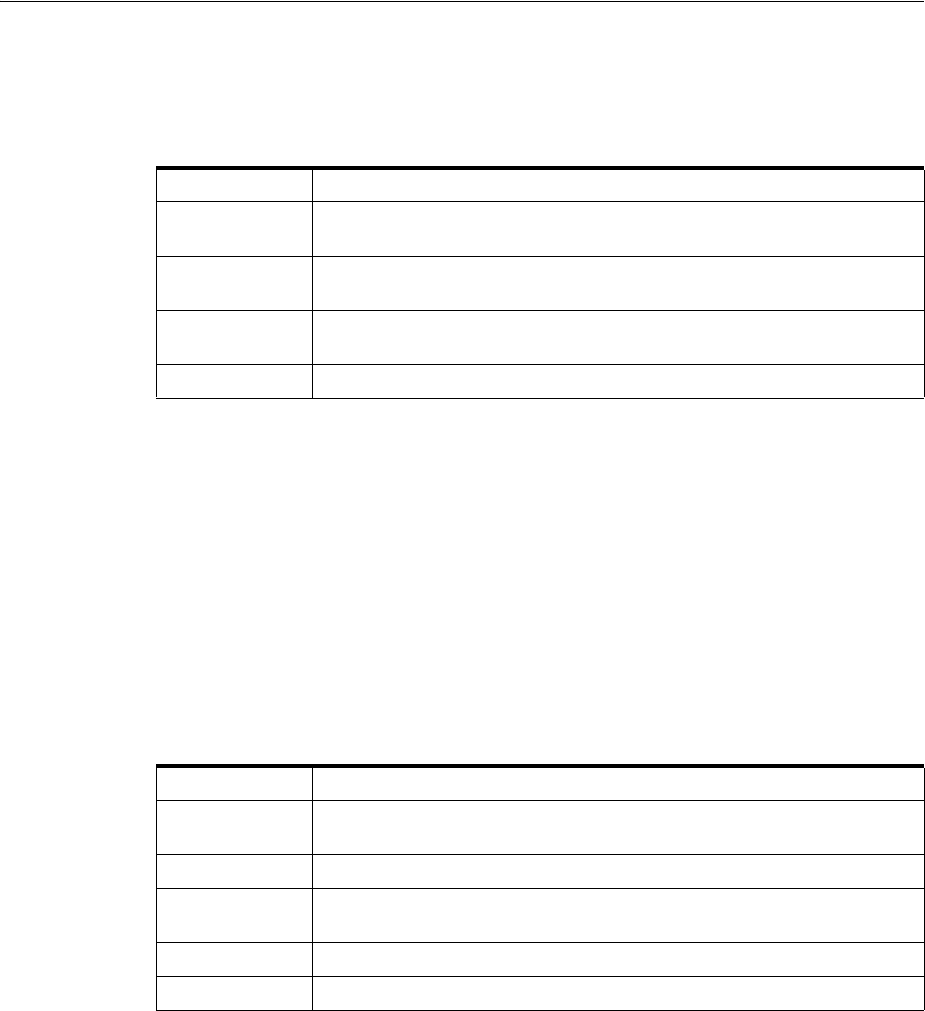

createDatabase................................................................................................................................. 2-57

configureDatabase........................................................................................................................... 2-62

createTemplateFromDB ................................................................................................................. 2-64

createCloneTemplate...................................................................................................................... 2-65

generateScripts ................................................................................................................................ 2-66

vi

deleteDatabase................................................................................................................................. 2-67

createPluggableDatabase ............................................................................................................... 2-68

unplugDatabase............................................................................................................................... 2-71

deletePluggableDatabase............................................................................................................... 2-72

configurePluggableDatabase......................................................................................................... 2-73

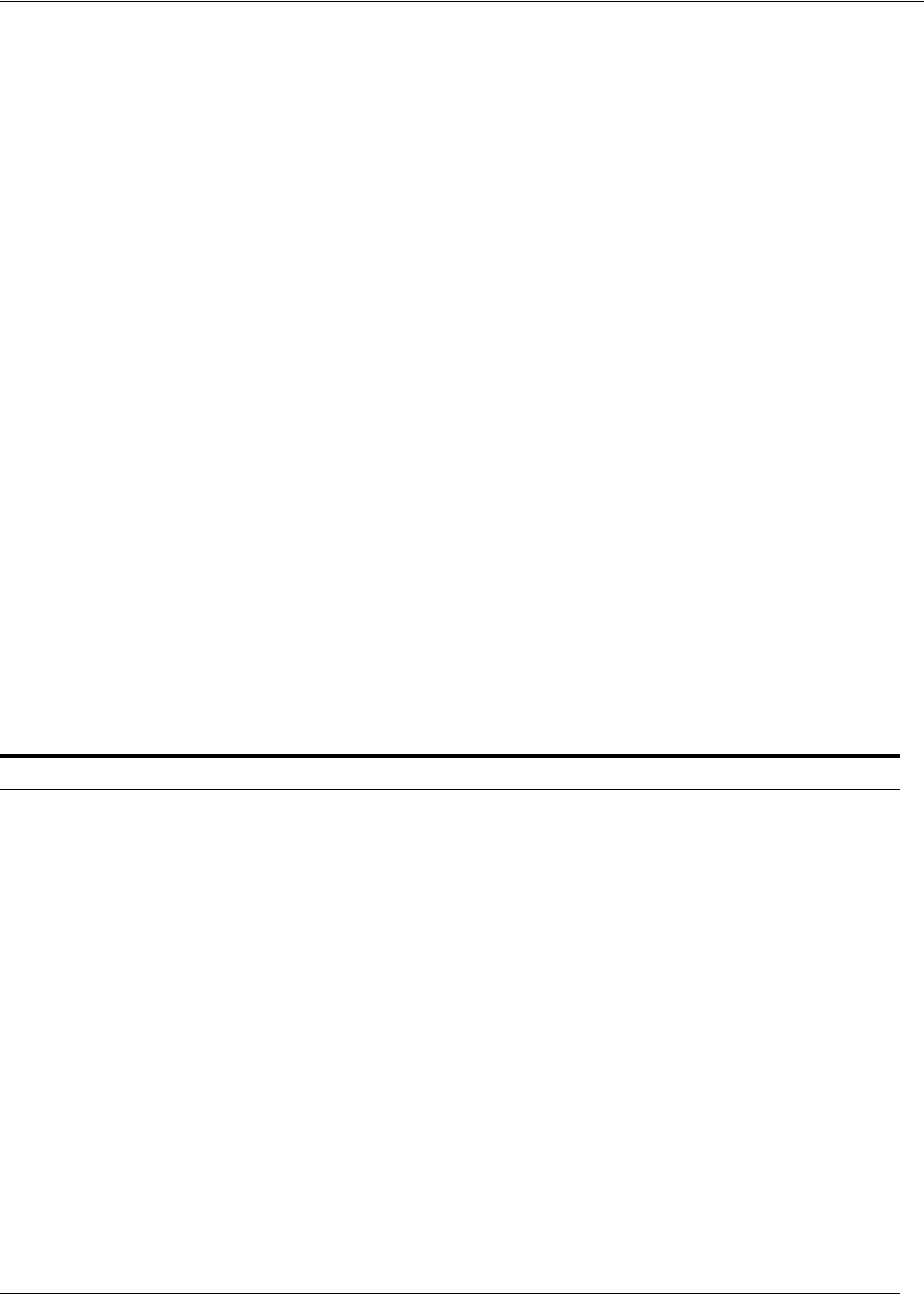

3 Starting Up and Shutting Down

Starting Up a Database............................................................................................................................ 3-1

About Database Startup Options..................................................................................................... 3-1

Specifying Initialization Parameters at Startup............................................................................. 3-2

About Automatic Startup of Database Services ............................................................................ 3-4

Preparing to Start Up an Instance.................................................................................................... 3-5

Starting Up an Instance ..................................................................................................................... 3-6

Altering Database Availability.............................................................................................................. 3-9

Mounting a Database to an Instance ............................................................................................... 3-9

Opening a Closed Database........................................................................................................... 3-10

Opening a Database in Read-Only Mode.................................................................................... 3-10

Restricting Access to an Open Database...................................................................................... 3-11

Shutting Down a Database.................................................................................................................. 3-11

Shutting Down with the Normal Mode....................................................................................... 3-12

Shutting Down with the Immediate Mode ................................................................................. 3-12

Shutting Down with the Transactional Mode............................................................................. 3-13

Shutting Down with the Abort Mode .......................................................................................... 3-13

Shutdown Timeout ......................................................................................................................... 3-14

Quiescing a Database ........................................................................................................................... 3-14

Placing a Database into a Quiesced State .................................................................................... 3-15

Restoring the System to Normal Operation................................................................................ 3-16

Viewing the Quiesce State of an Instance.................................................................................... 3-16

Suspending and Resuming a Database ............................................................................................ 3-16

4 Configuring Automatic Restart of an Oracle Database

About Oracle Restart ............................................................................................................................... 4-1

Oracle Restart Overview................................................................................................................... 4-2

About Startup Dependencies ........................................................................................................... 4-2

About Starting and Stopping Components with Oracle Restart................................................. 4-3

About Starting and Stopping Oracle Restart.................................................................................. 4-3

Oracle Restart Configuration............................................................................................................ 4-4

Oracle Restart Integration with Oracle Data Guard ..................................................................... 4-5

Fast Application Notification with Oracle Restart........................................................................ 4-6

Configuring Oracle Restart .................................................................................................................... 4-9

Preparing to Run SRVCTL............................................................................................................. 4-10

Obtaining Help for SRVCTL.......................................................................................................... 4-11

Adding Components to the Oracle Restart Configuration ....................................................... 4-12

Removing Components from the Oracle Restart Configuration ............................................. 4-13

Disabling and Enabling Oracle Restart Management for a Component ................................ 4-14

Viewing Component Status........................................................................................................... 4-15

Viewing the Oracle Restart Configuration for a Component................................................... 4-15

vii

Modifying the Oracle Restart Configuration for a Component............................................... 4-16

Managing Environment Variables in the Oracle Restart Configuration ................................ 4-16

Creating and Deleting Database Services with SRVCTL .......................................................... 4-18

Enabling FAN Events in an Oracle Restart Environment......................................................... 4-19

Automating the Failover of Connections Between Primary and Standby Databases........... 4-20

Enabling Clients for Fast Connection Failover ........................................................................... 4-20

Starting and Stopping Components Managed by Oracle Restart ............................................... 4-24

Stopping and Restarting Oracle Restart for Maintenance Operations....................................... 4-26

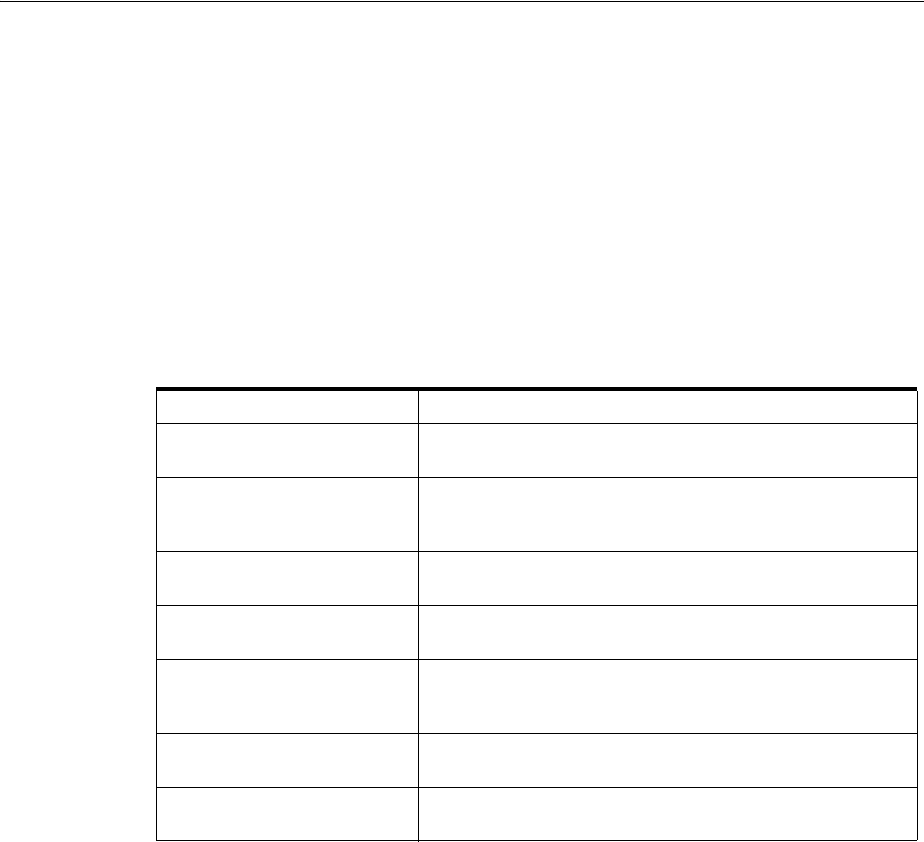

SRVCTL Command Reference for Oracle Restart.......................................................................... 4-29

add..................................................................................................................................................... 4-31

config................................................................................................................................................. 4-40

disable............................................................................................................................................... 4-44

downgrade ....................................................................................................................................... 4-47

enable ................................................................................................................................................ 4-48

getenv................................................................................................................................................ 4-51

modify............................................................................................................................................... 4-53

remove .............................................................................................................................................. 4-60

setenv ................................................................................................................................................ 4-63

start.................................................................................................................................................... 4-66

status ................................................................................................................................................. 4-70

stop.................................................................................................................................................... 4-74

unsetenv............................................................................................................................................ 4-79

update ............................................................................................................................................... 4-81

upgrade............................................................................................................................................. 4-82

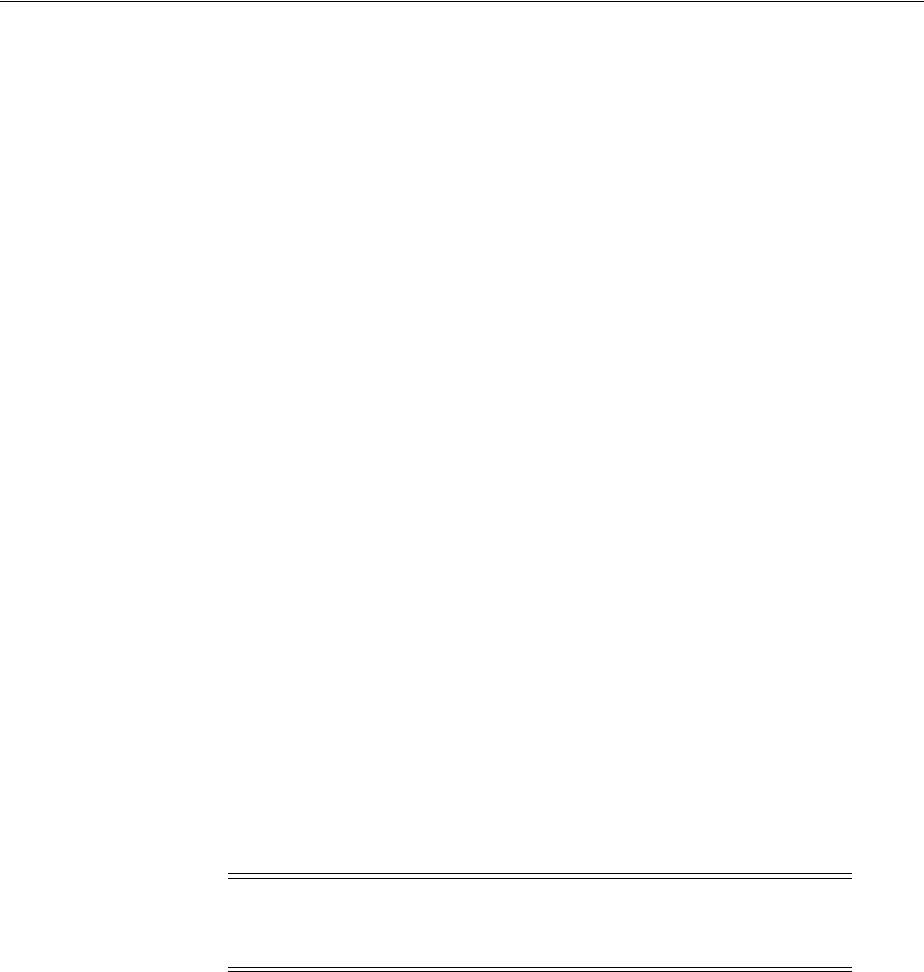

CRSCTL Command Reference ........................................................................................................... 4-83

check.................................................................................................................................................. 4-84

config................................................................................................................................................. 4-85

disable............................................................................................................................................... 4-86

enable ................................................................................................................................................ 4-87

start.................................................................................................................................................... 4-88

stop.................................................................................................................................................... 4-89

5 Managing Processes

About Dedicated and Shared Server Processes.................................................................................. 5-1

Dedicated Server Processes .............................................................................................................. 5-1

Shared Server Processes.................................................................................................................... 5-2

About Database Resident Connection Pooling.................................................................................. 5-4

Comparing DRCP to Dedicated Server and Shared Server......................................................... 5-5

Configuring Oracle Database for Shared Server ............................................................................... 5-6

Initialization Parameters for Shared Server ................................................................................... 5-6

Memory Management for Shared Server ....................................................................................... 5-7

Enabling Shared Server..................................................................................................................... 5-7

Configuring Dispatchers................................................................................................................... 5-9

Disabling Shared Server................................................................................................................. 5-14

Shared Server Data Dictionary Views.......................................................................................... 5-14

Configuring Database Resident Connection Pooling.................................................................... 5-15

Enabling Database Resident Connection Pooling ...................................................................... 5-15

viii

Configuring the Connection Pool for Database Resident Connection Pooling ..................... 5-16

Data Dictionary Views for Database Resident Connection Pooling........................................ 5-18

About Oracle Database Background Processes............................................................................... 5-18

Managing Processes for Parallel SQL Execution ............................................................................ 5-19

About Parallel Execution Servers ................................................................................................. 5-20

Altering Parallel Execution for a Session..................................................................................... 5-20

Managing Processes for External Procedures .................................................................................. 5-21

About External Procedures............................................................................................................ 5-21

DBA Tasks to Enable External Procedure Calls.......................................................................... 5-22

Terminating Sessions ........................................................................................................................... 5-23

Identifying Which Session to Terminate...................................................................................... 5-23

Terminating an Active Session...................................................................................................... 5-24

Terminating an Inactive Session ................................................................................................... 5-25

Process and Session Data Dictionary Views.................................................................................... 5-25

6 Managing Memory

About Memory Management................................................................................................................. 6-1

Memory Architecture Overview............................................................................................................ 6-2

Using Automatic Memory Management ............................................................................................. 6-4

About Automatic Memory Management....................................................................................... 6-4

Enabling Automatic Memory Management .................................................................................. 6-5

Monitoring and Tuning Automatic Memory Management ........................................................ 6-7

Configuring Memory Manually............................................................................................................ 6-8

Using Automatic Shared Memory Management .......................................................................... 6-8

Using Manual Shared Memory Management............................................................................. 6-15

Using Automatic PGA Memory Management ........................................................................... 6-20

Using Manual PGA Memory Management ................................................................................ 6-22

Using Force Full Database Caching Mode ....................................................................................... 6-22

About Force Full Database Caching Mode.................................................................................. 6-22

Before Enabling Force Full Database Caching Mode................................................................. 6-23

Enabling Force Full Database Caching Mode............................................................................. 6-24

Disabling Force Full Database Caching Mode............................................................................ 6-24

Configuring Database Smart Flash Cache........................................................................................ 6-24

When to Configure Database Smart Flash Cache....................................................................... 6-25

Sizing Database Smart Flash Cache.............................................................................................. 6-25

Tuning Memory for Database Smart Flash Cache ..................................................................... 6-25

Database Smart Flash Cache Initialization Parameters ............................................................. 6-26

Database Smart Flash Cache in an Oracle Real Applications Clusters Environment........... 6-27

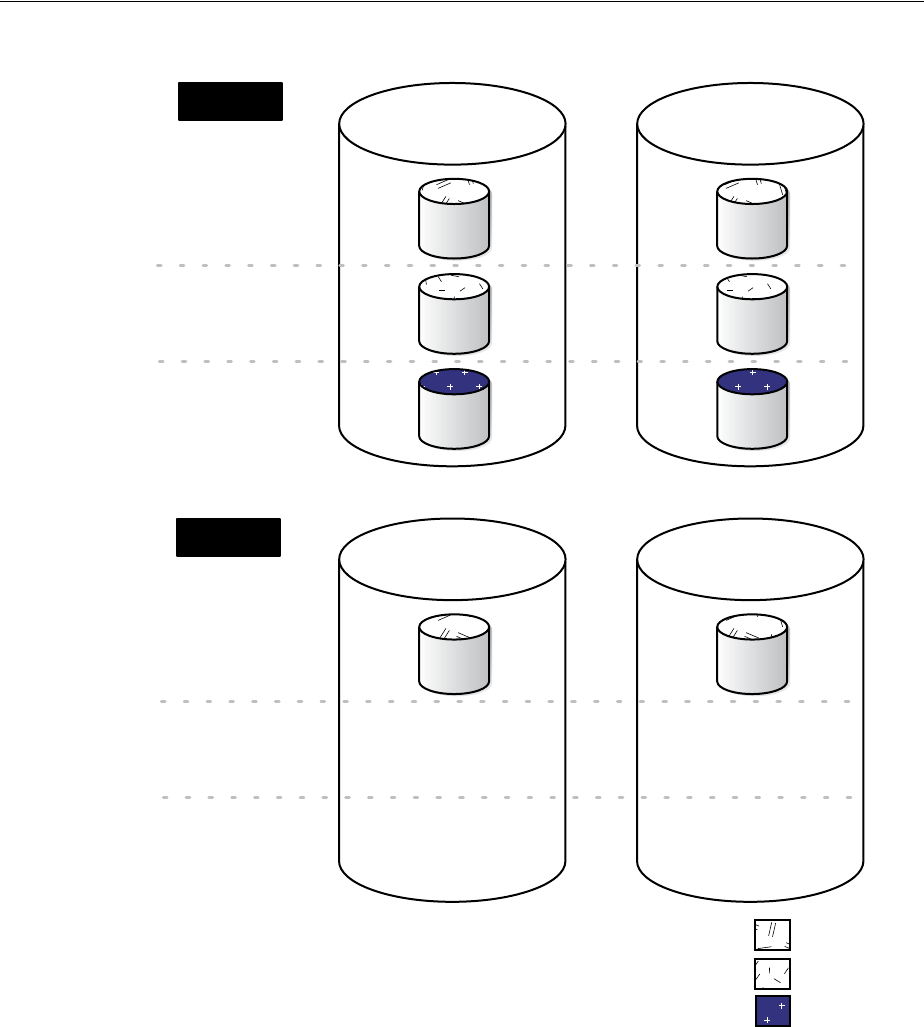

Using the In-Memory Column Store................................................................................................. 6-27

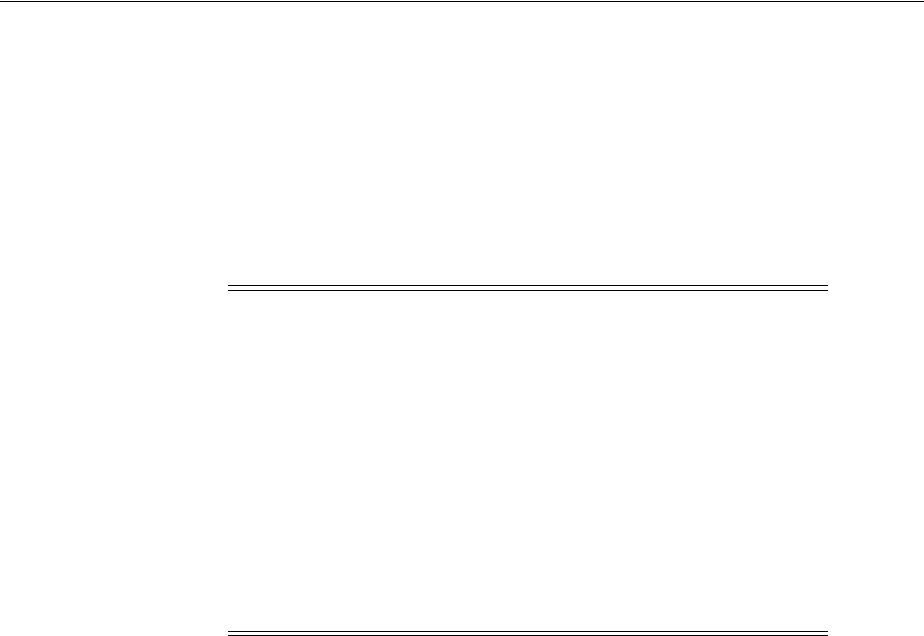

About the IM Column Store .......................................................................................................... 6-27

Initialization Parameters Related to the IM Column Store....................................................... 6-31

Enabling the IM Column Store for a Database ........................................................................... 6-33

Enabling and Disabling Tables for the IM Column Store ......................................................... 6-34

Enabling and Disabling Tablespaces for the IM Column Store ............................................... 6-36

Enabling and Disabling Materialized Views for the IM Column Store .................................. 6-37

Data Pump and the IM Column Store.......................................................................................... 6-38

Using IM Column Store In Enterprise Manager......................................................................... 6-38

ix

Memory Management Reference ....................................................................................................... 6-42

Platforms That Support Automatic Memory Management...................................................... 6-42

Memory Management Data Dictionary Views........................................................................... 6-42

7 Managing Users and Securing the Database

The Importance of Establishing a Security Policy for Your Database........................................... 7-1

Managing Users and Resources............................................................................................................. 7-1

Managing User Privileges and Roles ................................................................................................... 7-2

Auditing Database Activity.................................................................................................................... 7-2

Predefined User Accounts ...................................................................................................................... 7-2

8 Monitoring the Database

Monitoring Errors and Alerts................................................................................................................. 8-1

Monitoring Errors with Trace Files and the Alert Log ................................................................. 8-1

Monitoring a Database with Server-Generated Alerts ................................................................. 8-4

Monitoring Performance......................................................................................................................... 8-7

Monitoring Locks............................................................................................................................... 8-7

Monitoring Wait Events.................................................................................................................... 8-8

Performance Monitoring Data Dictionary Views.......................................................................... 8-8

9 Managing Diagnostic Data

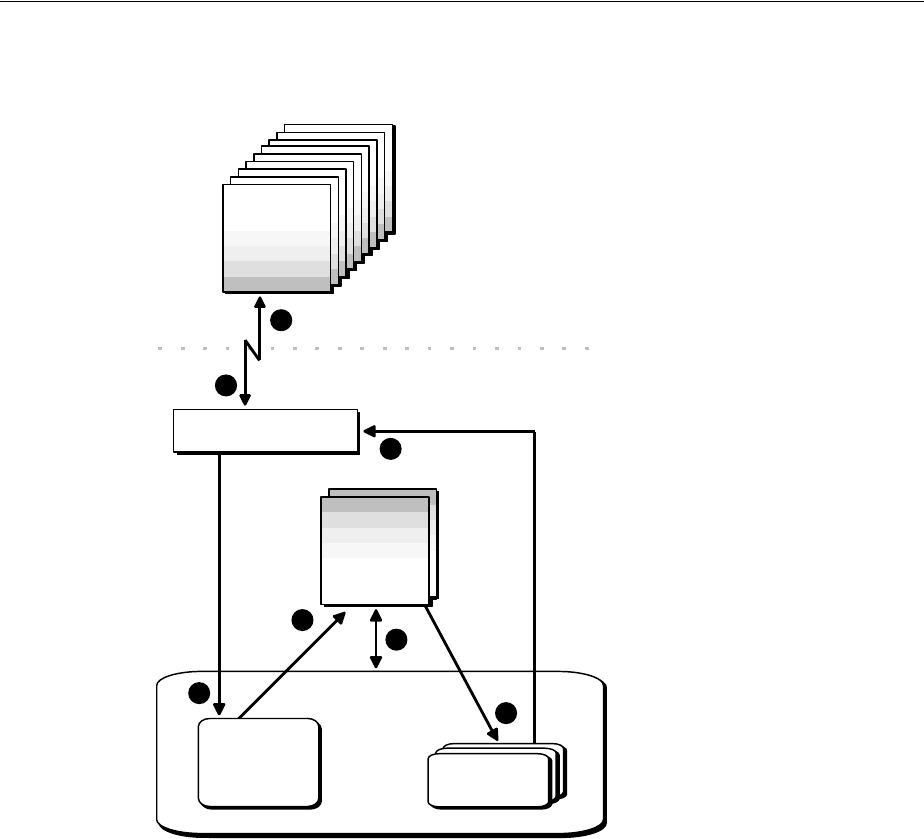

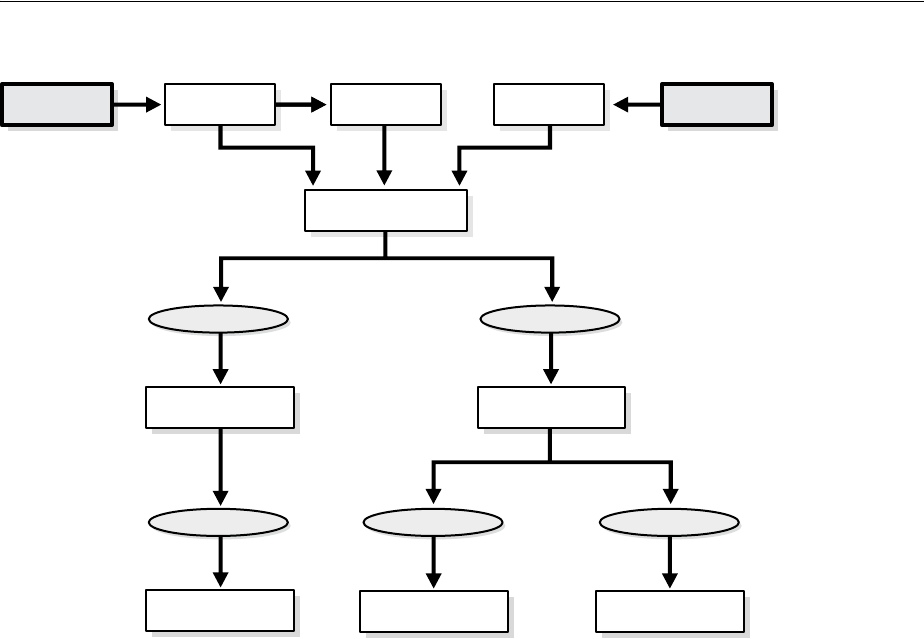

About the Oracle Database Fault Diagnosability Infrastructure.................................................... 9-1

Fault Diagnosability Infrastructure Overview .............................................................................. 9-1

About Incidents and Problems......................................................................................................... 9-3

Fault Diagnosability Infrastructure Components ......................................................................... 9-4

Structure, Contents, and Location of the Automatic Diagnostic Repository ............................ 9-8

Investigating, Reporting, and Resolving a Problem ...................................................................... 9-12

Roadmap—Investigating, Reporting, and Resolving a Problem............................................. 9-12

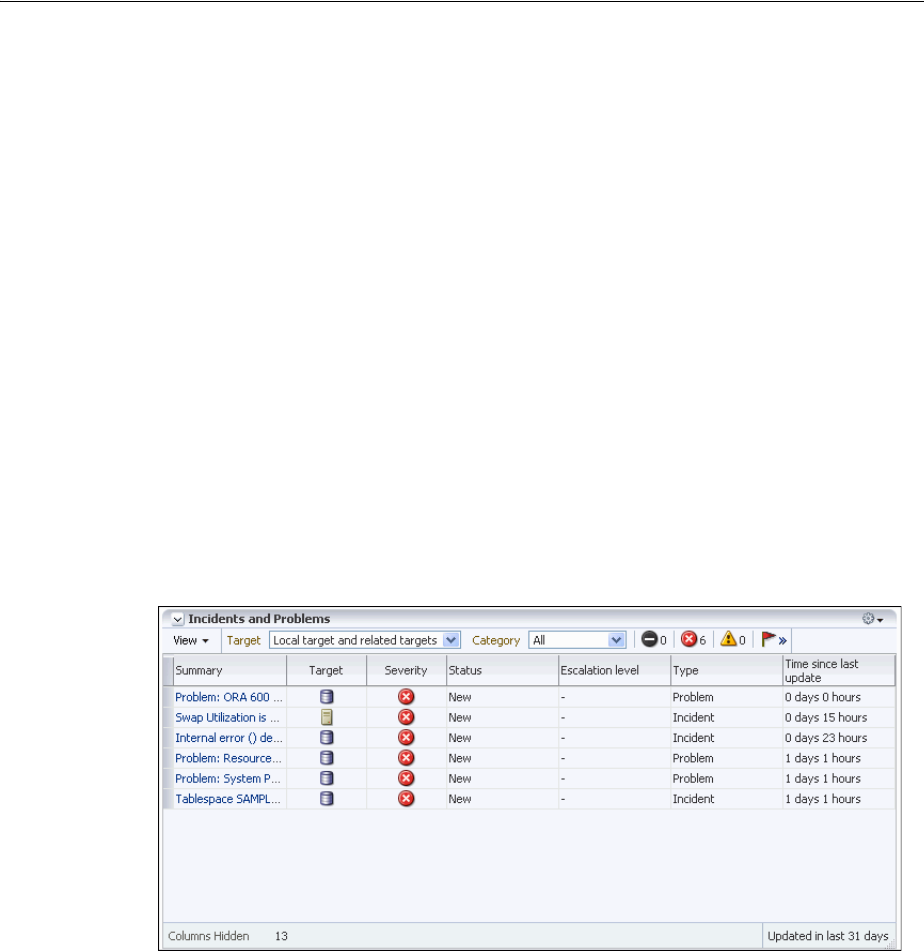

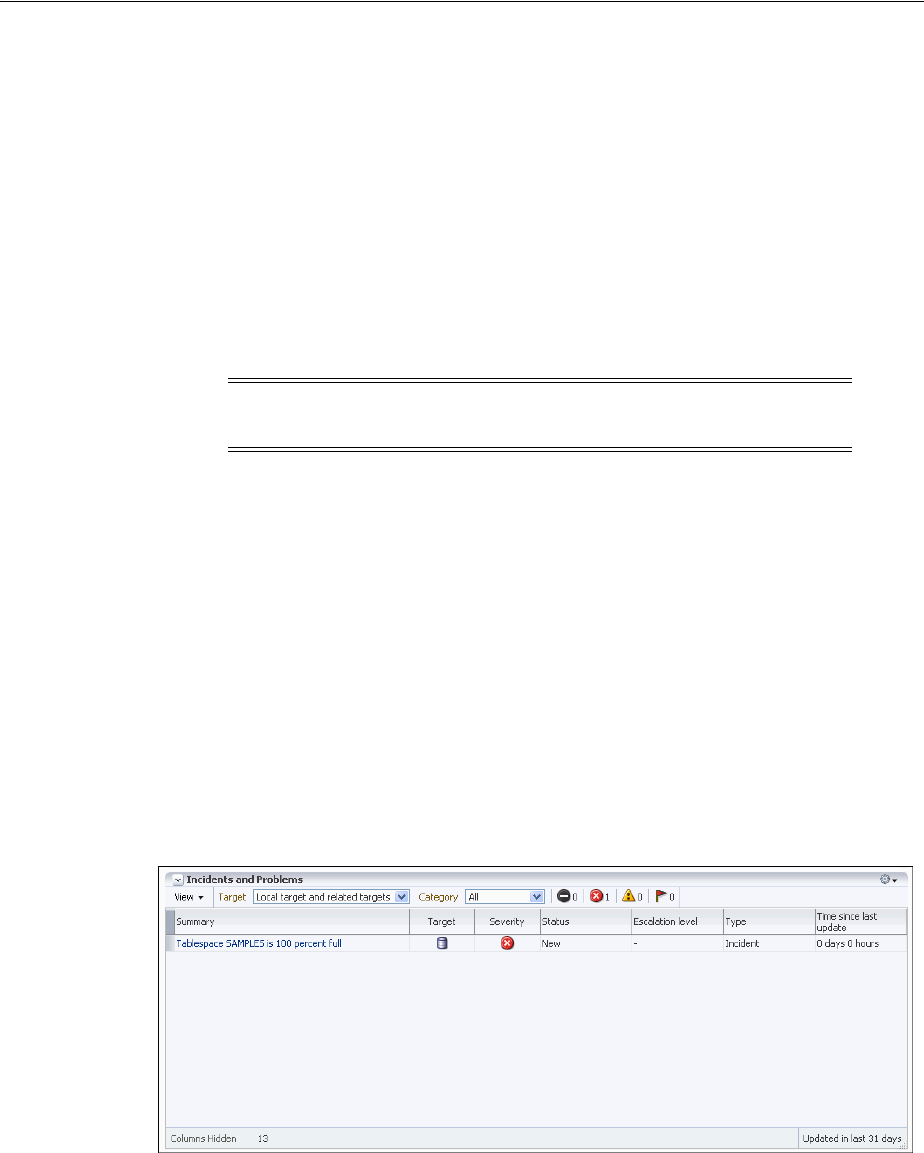

Task 1: View Critical Error Alerts in Cloud Control.................................................................. 9-14

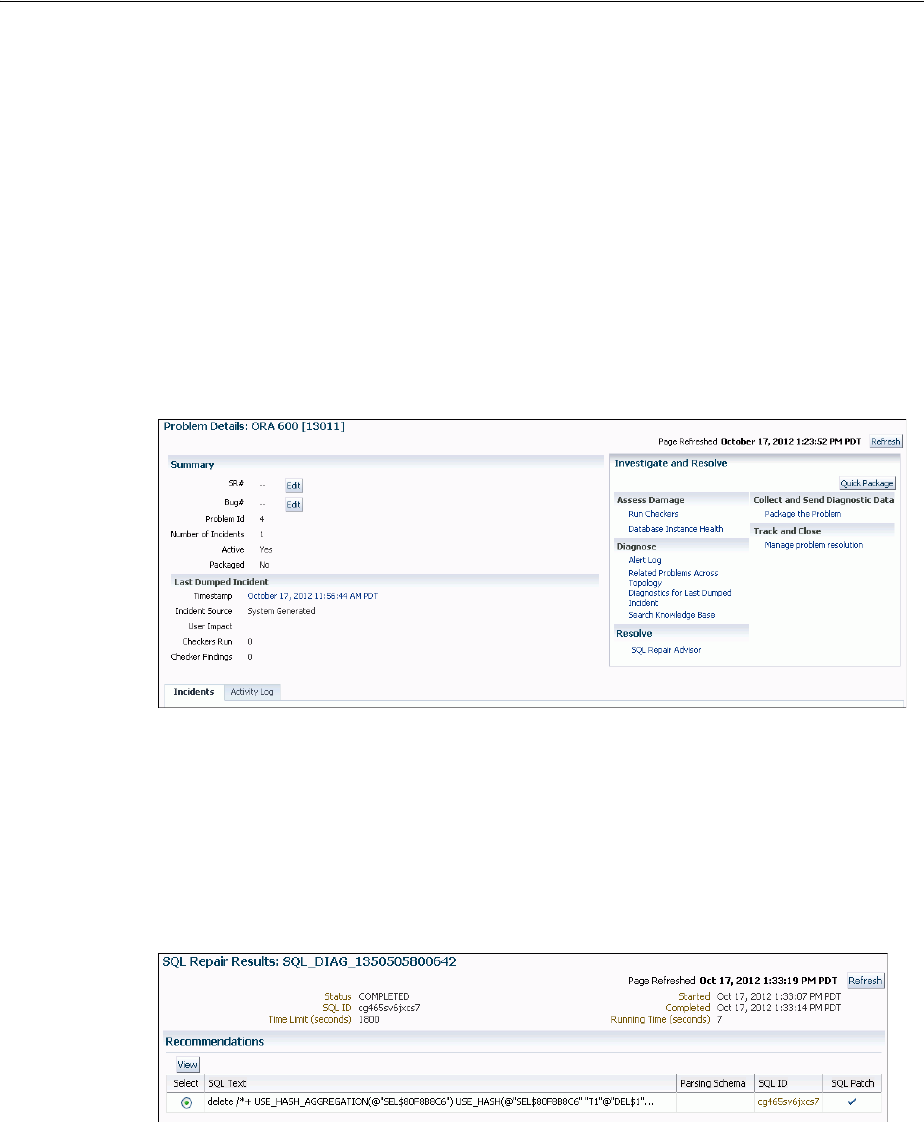

Task 2: View Problem Details........................................................................................................ 9-15

Task 3: (Optional) Gather Additional Diagnostic Information ................................................ 9-16

Task 4: (Optional) Create a Service Request................................................................................ 9-16

Task 5: Package and Upload Diagnostic Data to Oracle Support............................................ 9-16

Task 6: Track the Service Request and Implement Any Repairs.............................................. 9-18

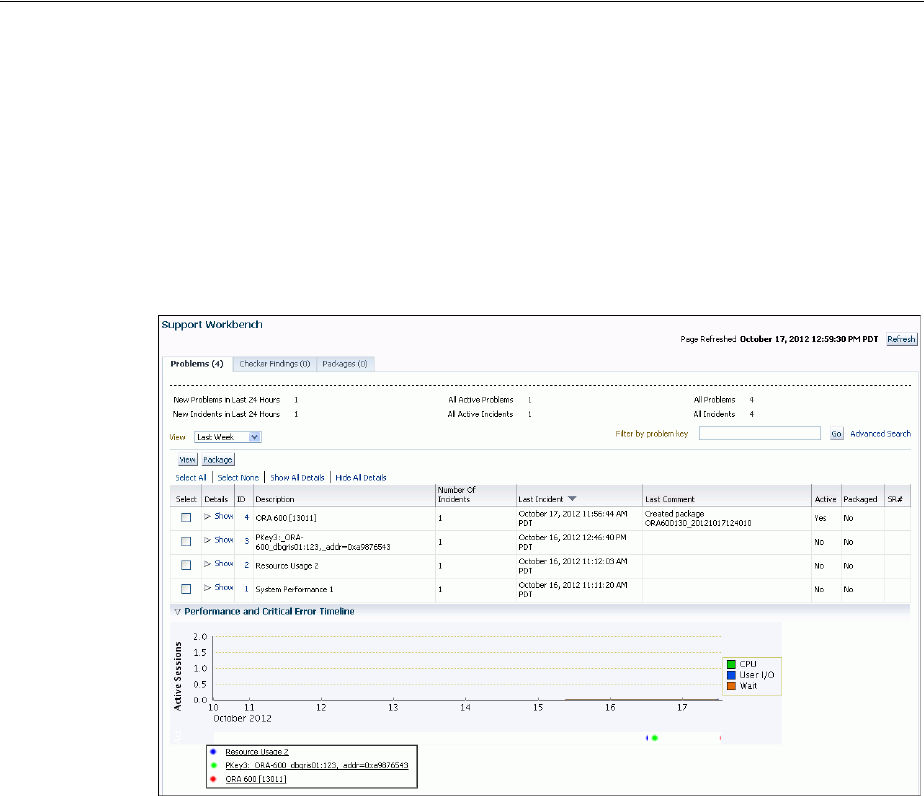

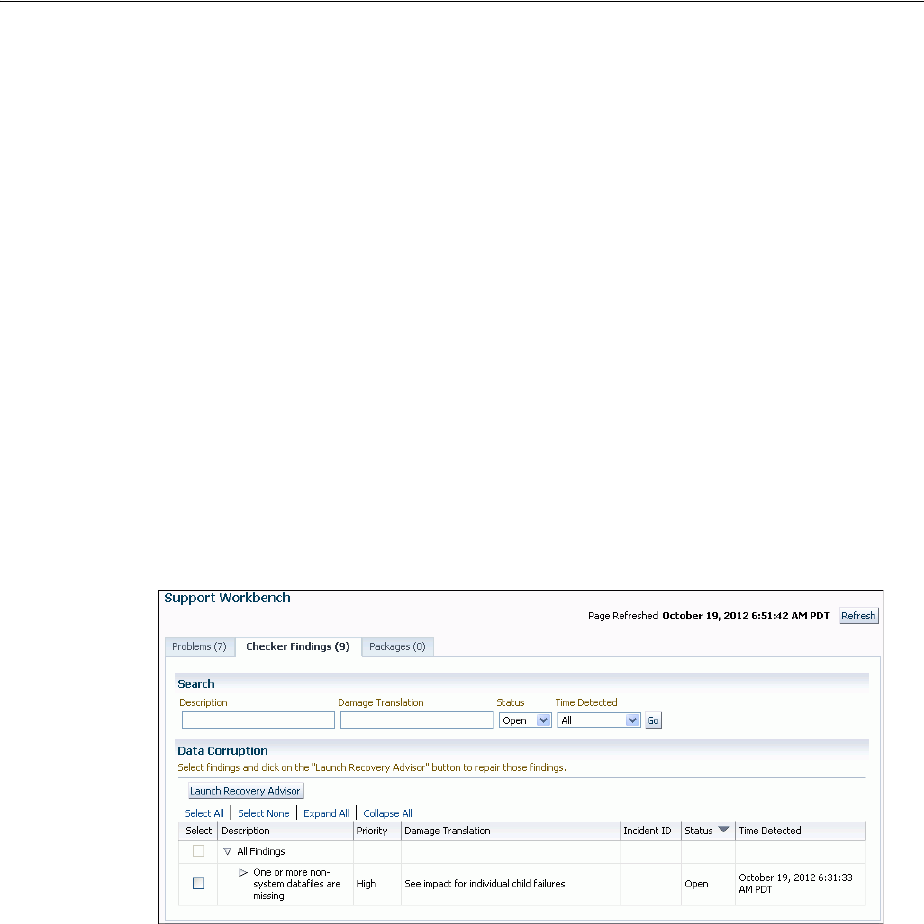

Viewing Problems with the Support Workbench .......................................................................... 9-19

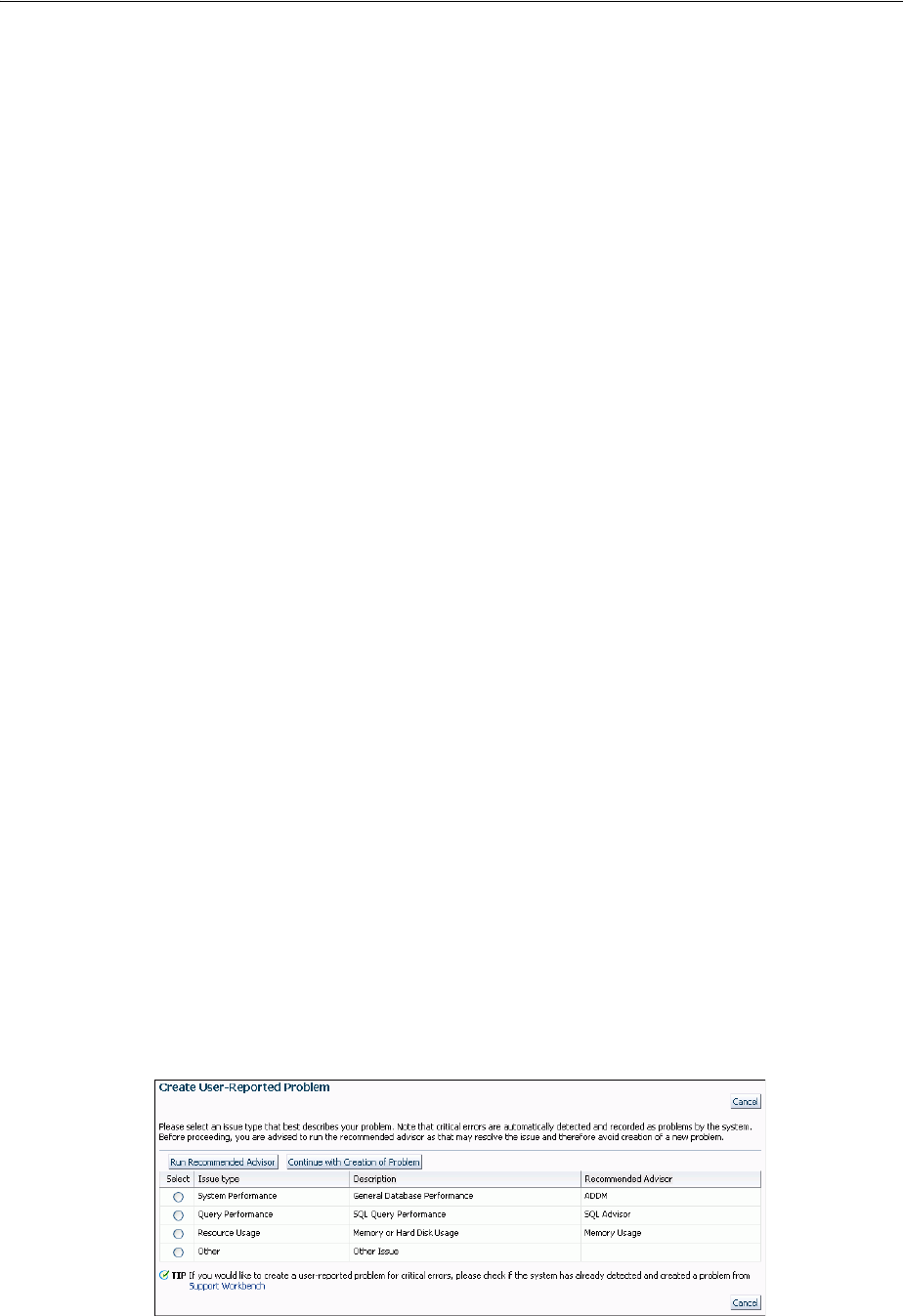

Creating a User-Reported Problem.................................................................................................... 9-20

Viewing the Alert Log .......................................................................................................................... 9-21

Finding Trace Files................................................................................................................................ 9-22

Running Health Checks with Health Monitor................................................................................ 9-22

About Health Monitor.................................................................................................................... 9-22

Running Health Checks Manually ............................................................................................... 9-24

Viewing Checker Reports .............................................................................................................. 9-25

Health Monitor Views .................................................................................................................... 9-28

Health Check Parameters Reference ............................................................................................ 9-28

Repairing SQL Failures with the SQL Repair Advisor ................................................................. 9-29

About the SQL Repair Advisor..................................................................................................... 9-29

x

Running the SQL Repair Advisor................................................................................................. 9-30

Viewing, Disabling, or Removing a SQL Patch.......................................................................... 9-31

Repairing Data Corruptions with the Data Recovery Advisor .................................................... 9-31

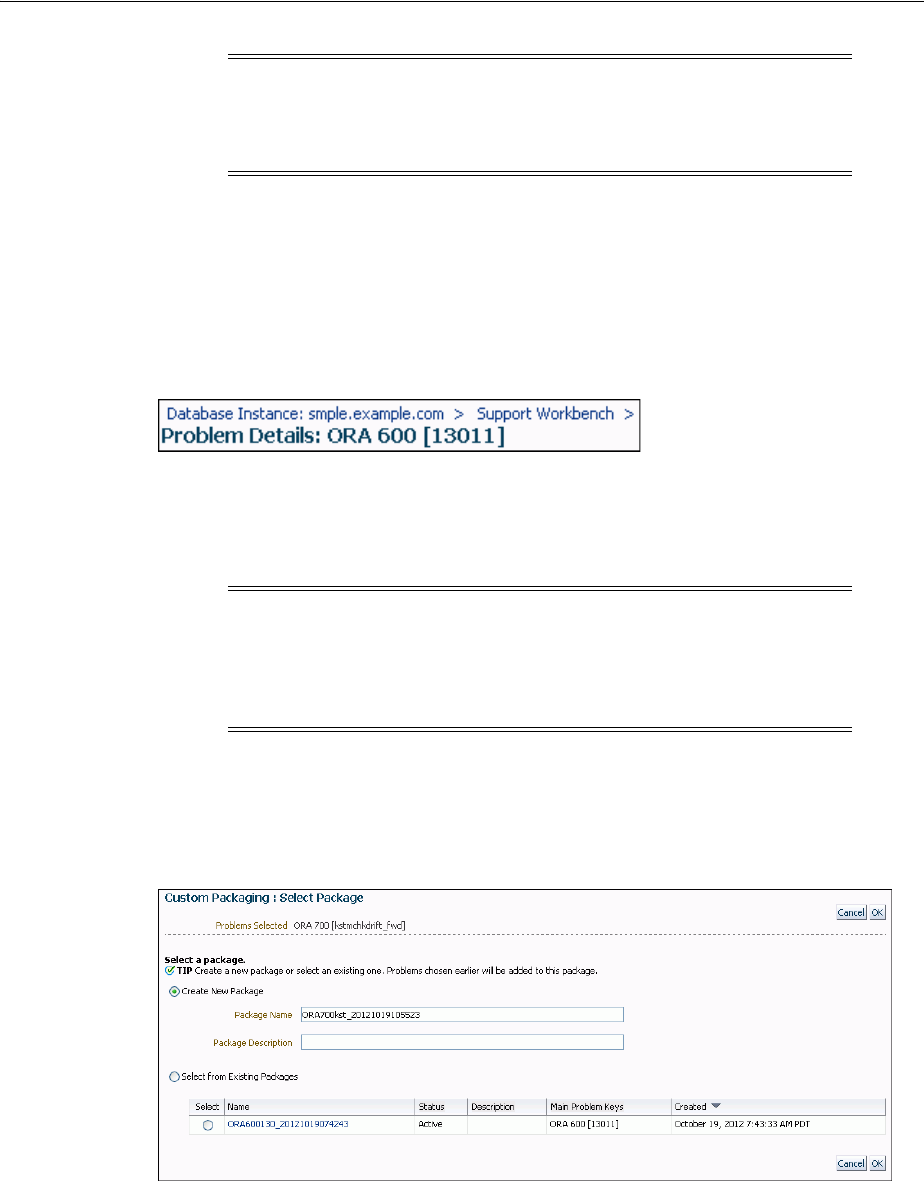

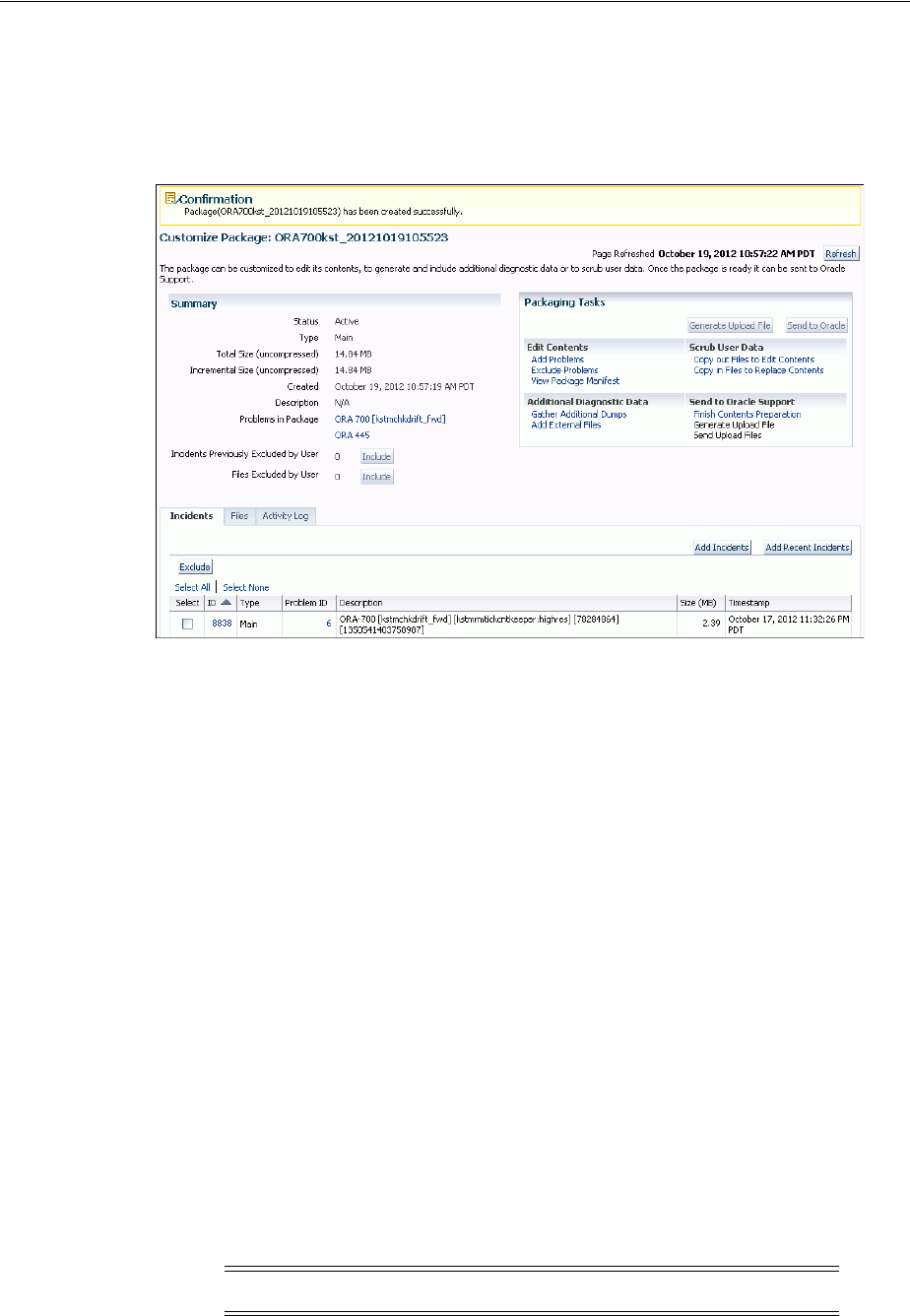

Creating, Editing, and Uploading Custom Incident Packages..................................................... 9-32

About Incident Packages................................................................................................................ 9-33

Packaging and Uploading Problems with Custom Packaging ................................................ 9-35

Viewing and Modifying Incident Packages ................................................................................ 9-39

Creating, Editing, and Uploading Correlated Packages ........................................................... 9-43

Deleting Correlated Packages ....................................................................................................... 9-44

Setting Incident Packaging Preferences....................................................................................... 9-44

Part II Oracle Database Structure and Storage

10 Managing Control Files

What Is a Control File? ......................................................................................................................... 10-1

Guidelines for Control Files ............................................................................................................... 10-2

Provide Filenames for the Control Files ...................................................................................... 10-2

Multiplex Control Files on Different Disks ................................................................................. 10-2

Back Up Control Files..................................................................................................................... 10-3

Manage the Size of Control Files .................................................................................................. 10-3

Creating Control Files .......................................................................................................................... 10-3

Creating Initial Control Files ......................................................................................................... 10-3

Creating Additional Copies, Renaming, and Relocating Control Files ................................. 10-4

Creating New Control Files........................................................................................................... 10-4

Troubleshooting After Creating Control Files ................................................................................ 10-7

Checking for Missing or Extra Files ............................................................................................. 10-7

Handling Errors During CREATE CONTROLFILE .................................................................. 10-7

Backing Up Control Files..................................................................................................................... 10-7

Recovering a Control File Using a Current Copy ........................................................................... 10-8

Recovering from Control File Corruption Using a Control File Copy.................................... 10-8

Recovering from Permanent Media Failure Using a Control File Copy................................. 10-8

Dropping Control Files ........................................................................................................................ 10-9

Control Files Data Dictionary Views ................................................................................................ 10-9

11 Managing the Redo Log

What Is the Redo Log?.......................................................................................................................... 11-1

Redo Threads................................................................................................................................... 11-1

Redo Log Contents.......................................................................................................................... 11-2

How Oracle Database Writes to the Redo Log ........................................................................... 11-2

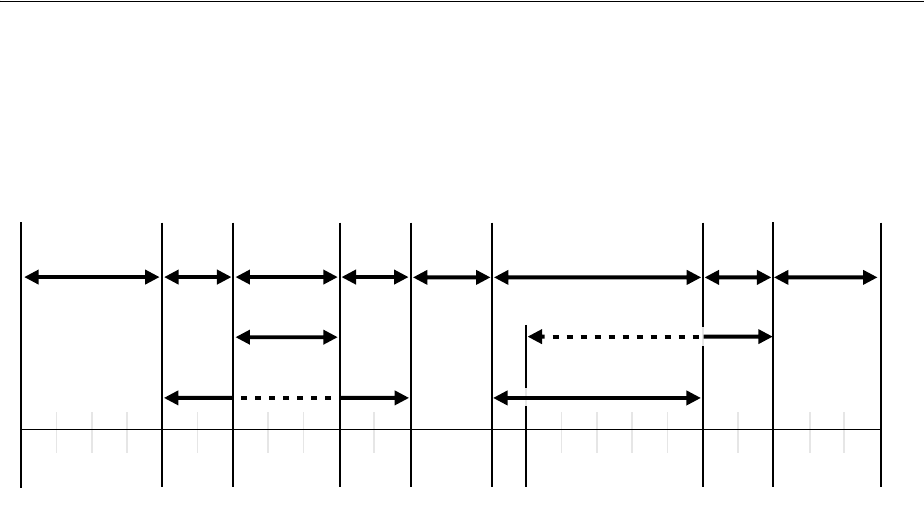

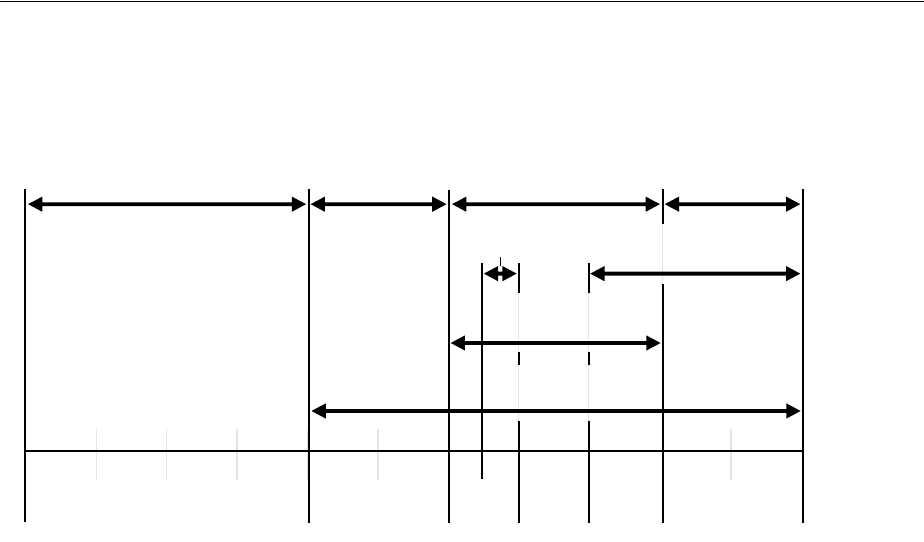

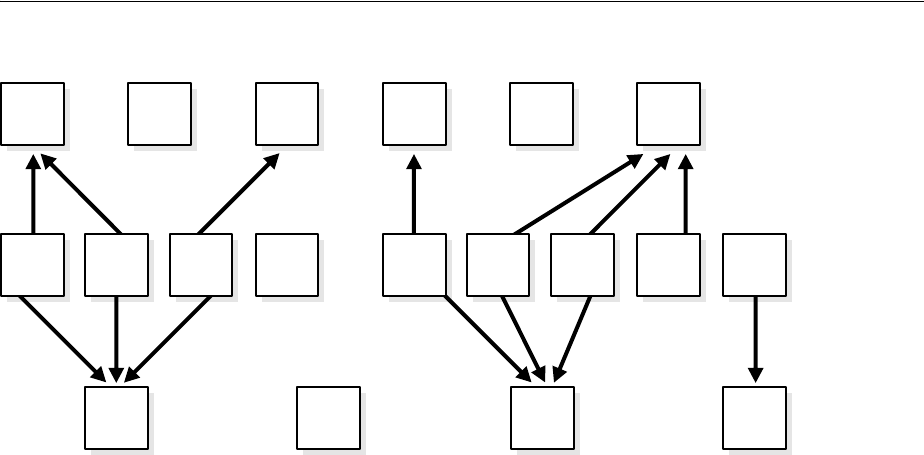

Planning the Redo Log......................................................................................................................... 11-4

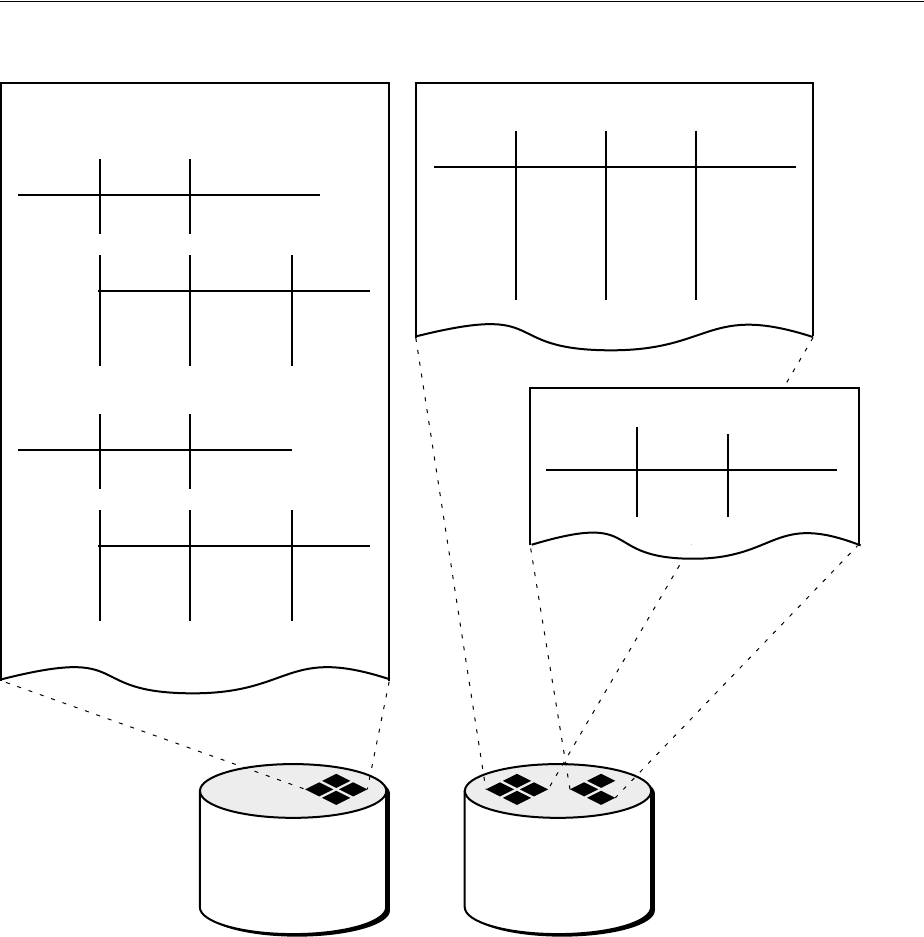

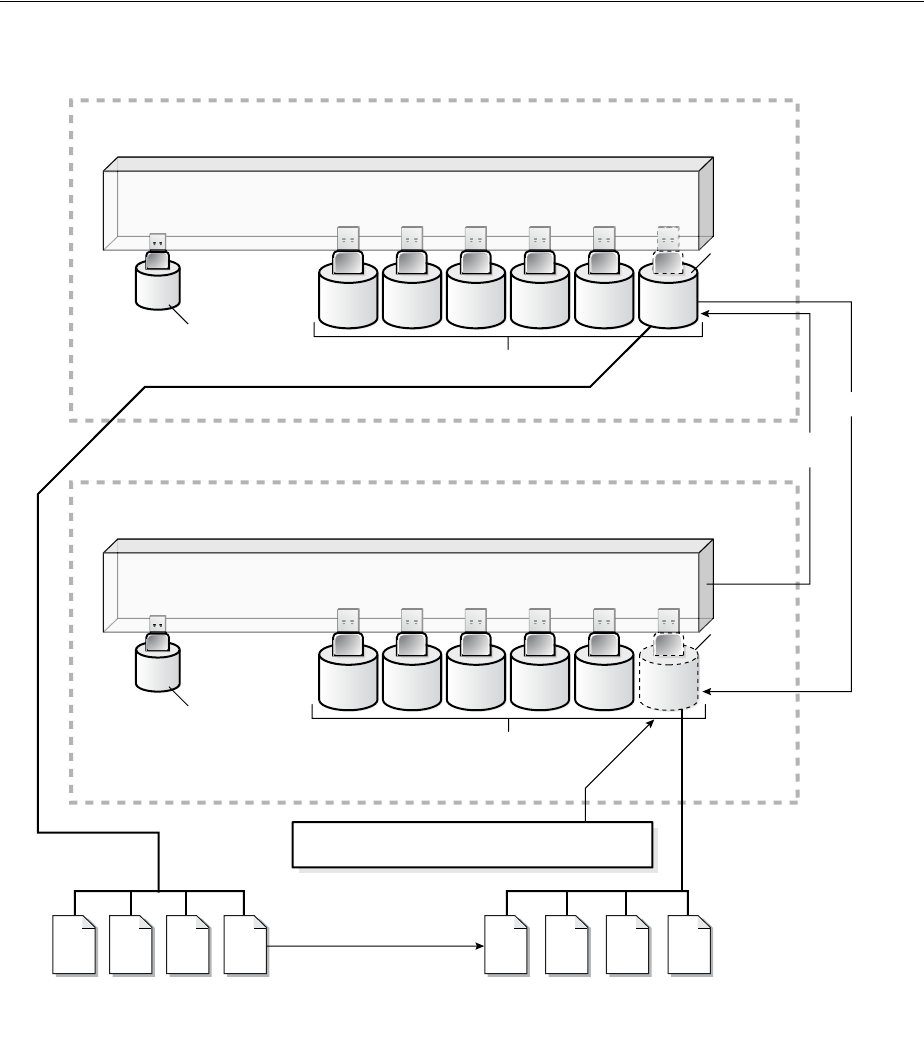

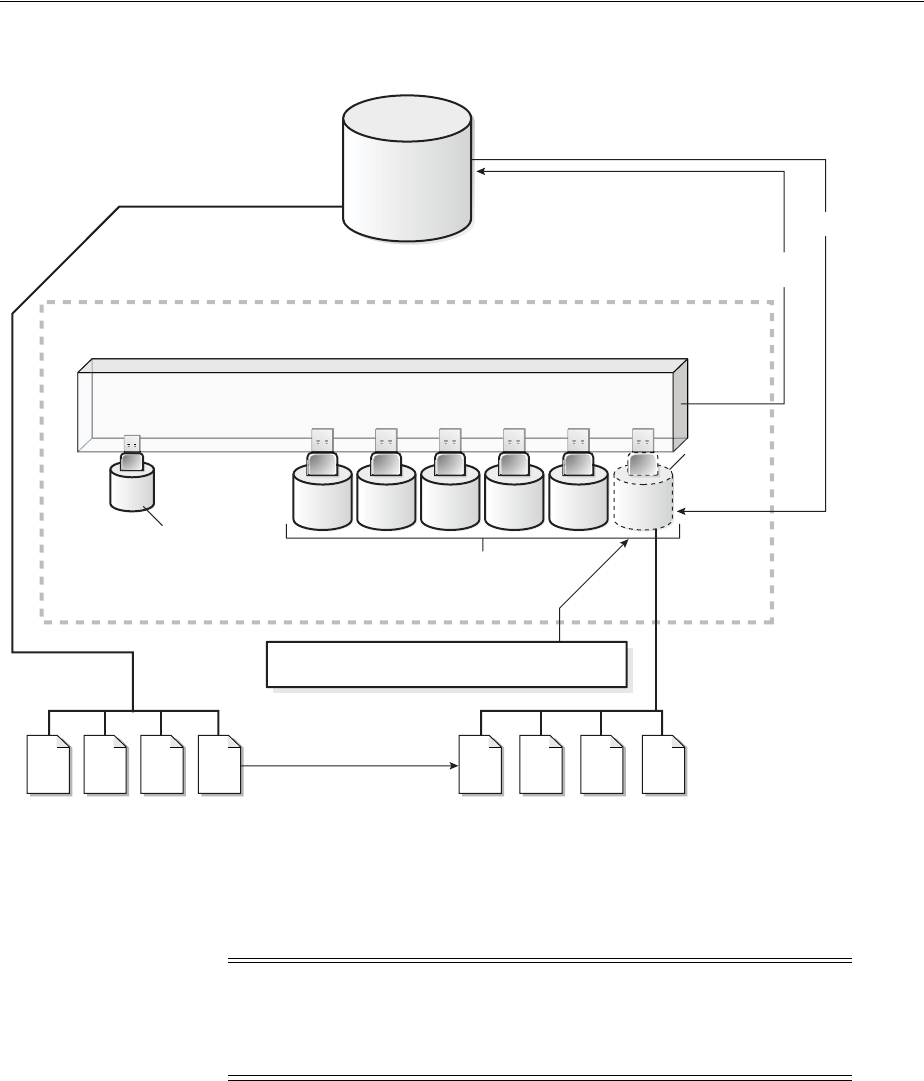

Multiplexing Redo Log Files ......................................................................................................... 11-4

Placing Redo Log Members on Different Disks.......................................................................... 11-6

Planning the Size of Redo Log Files ............................................................................................. 11-7

Planning the Block Size of Redo Log Files .................................................................................. 11-7

Choosing the Number of Redo Log Files .................................................................................... 11-8