Para View Manual.v4.1

User Manual:

Open the PDF directly: View PDF ![]() .

.

Page Count: 433 [warning: Documents this large are best viewed by clicking the View PDF Link!]

Version 4.0

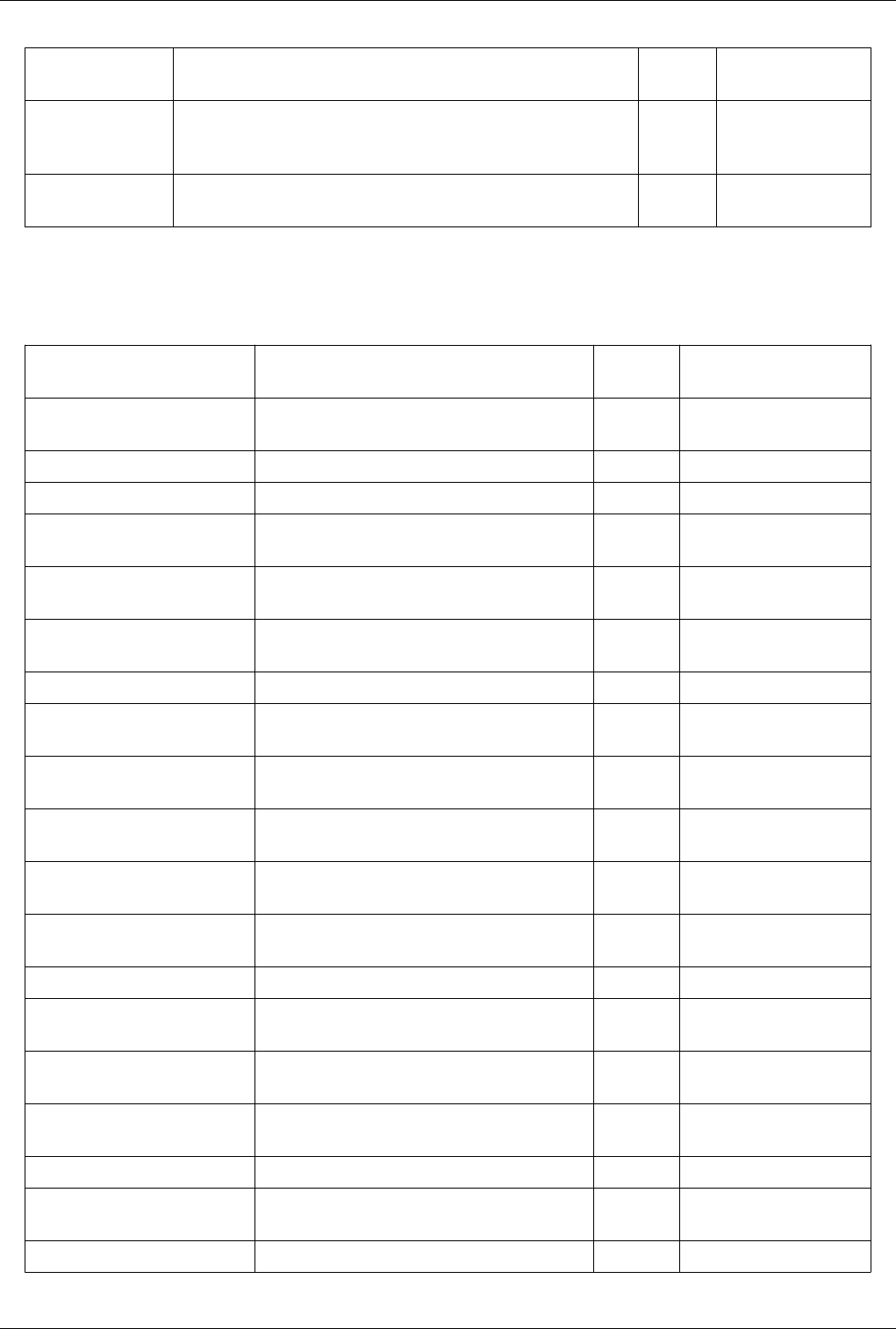

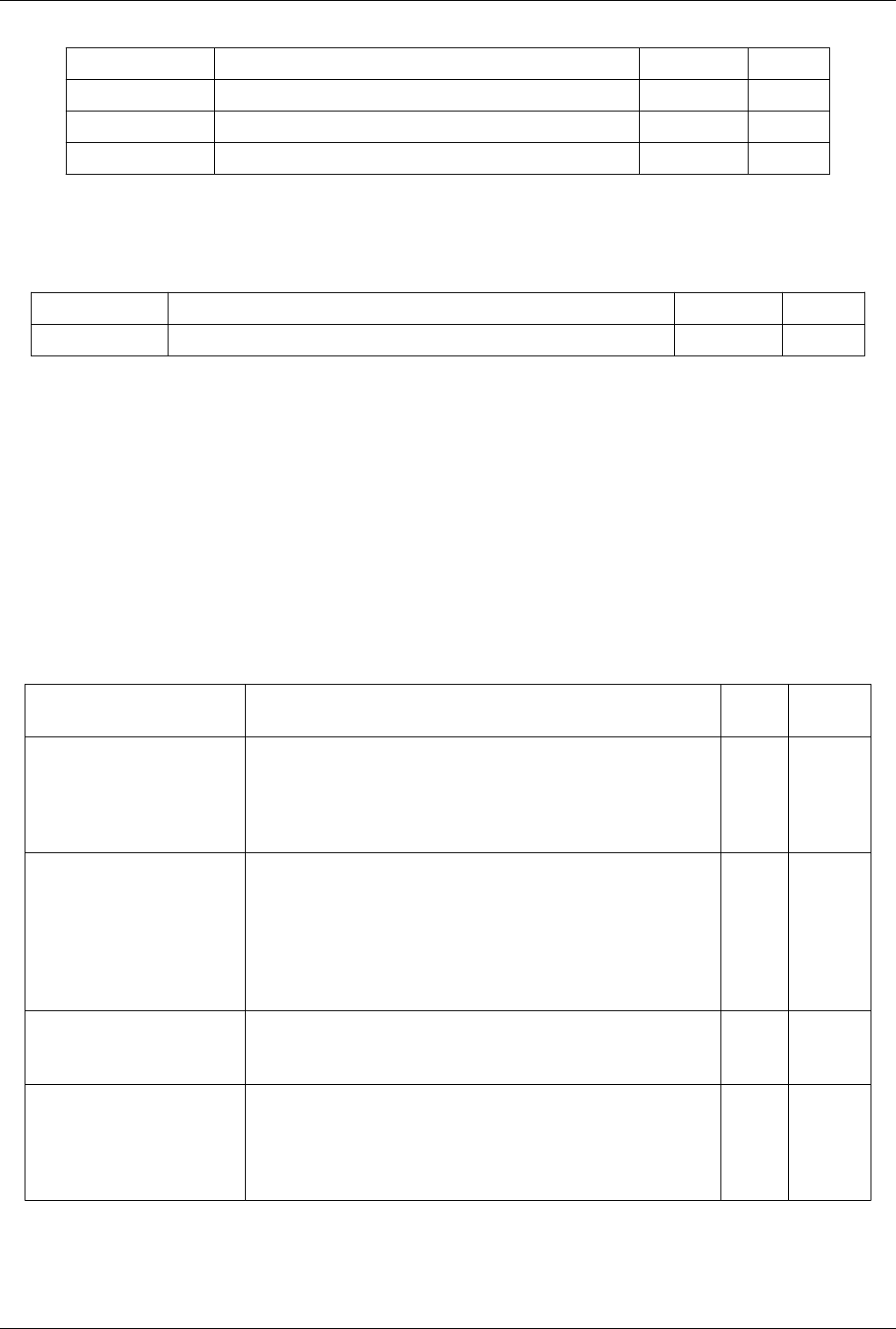

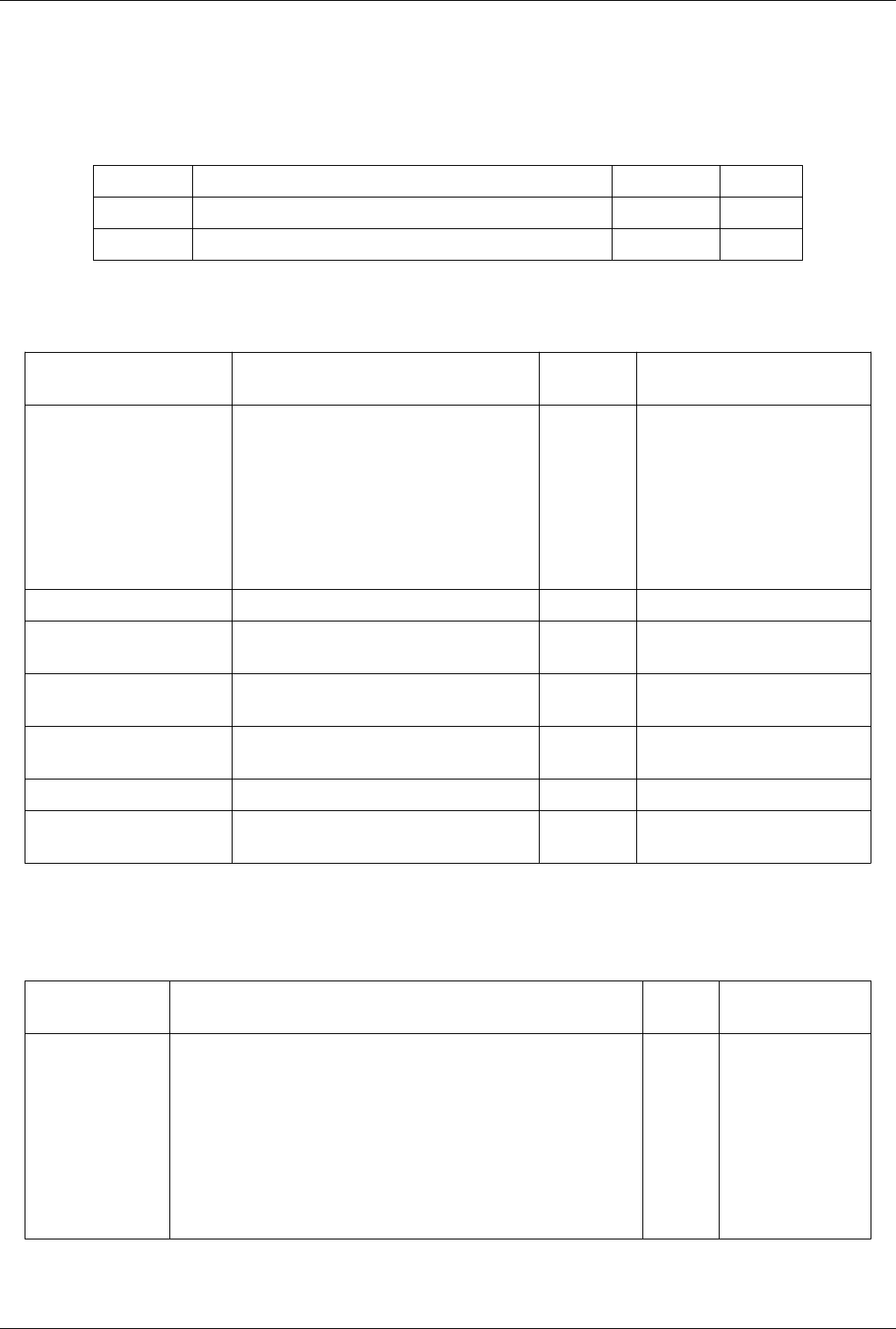

Contents

Articles

Introduction 1

About Paraview 1

Loading Data 9

Data Ingestion 9

Understanding Data 13

VTK Data Model 13

Information Panel 23

Statistics Inspector 27

Memory Inspector 28

Multi-block Inspector 32

Displaying Data 35

Views, Representations and Color Mapping 35

Filtering Data 70

Rationale 70

Filter Parameters 70

The Pipeline 72

Filter Categories 76

Best Practices 79

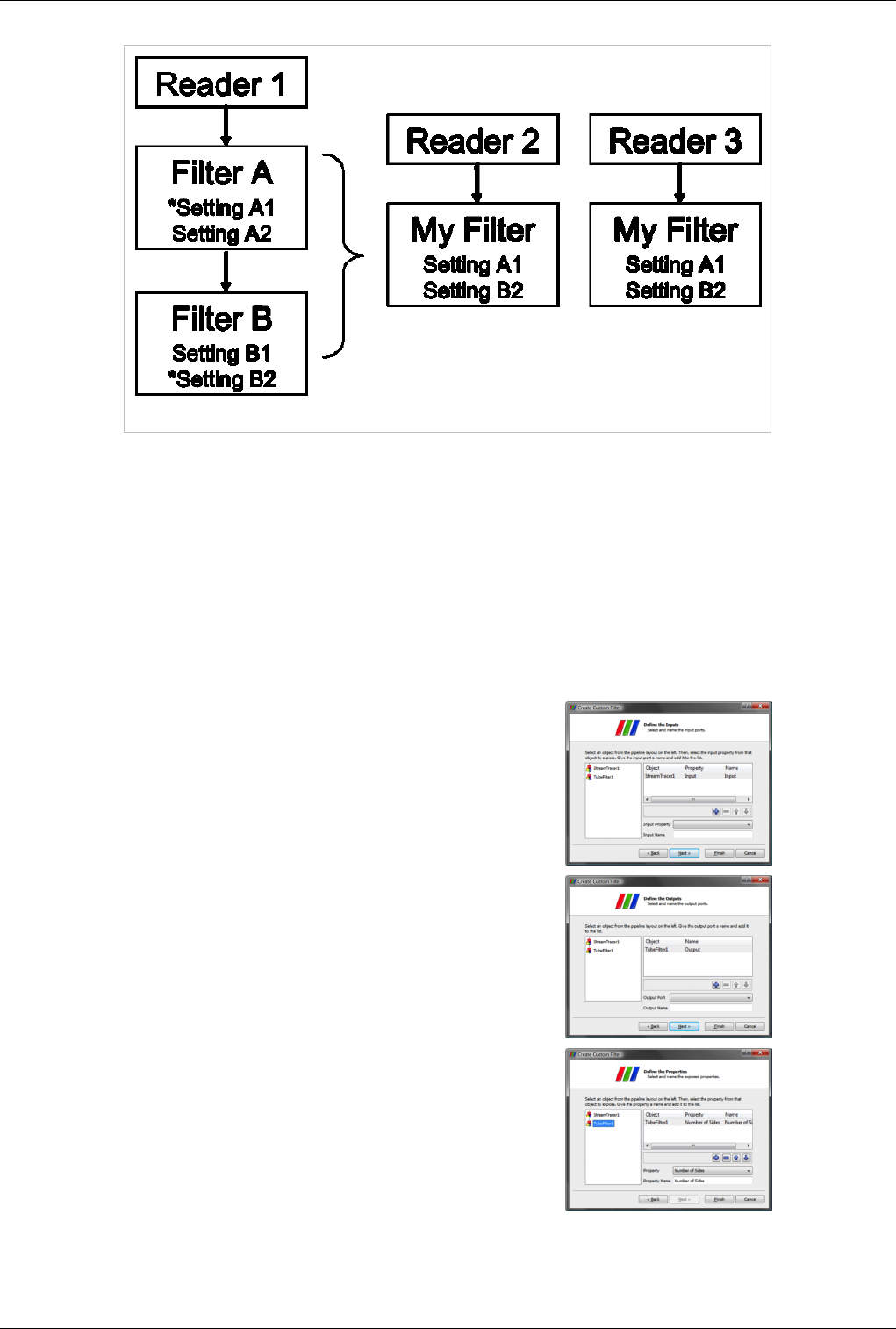

Custom Filters aka Macro Filters 82

Quantative Analysis 85

Drilling Down 85

Python Programmable Filter 85

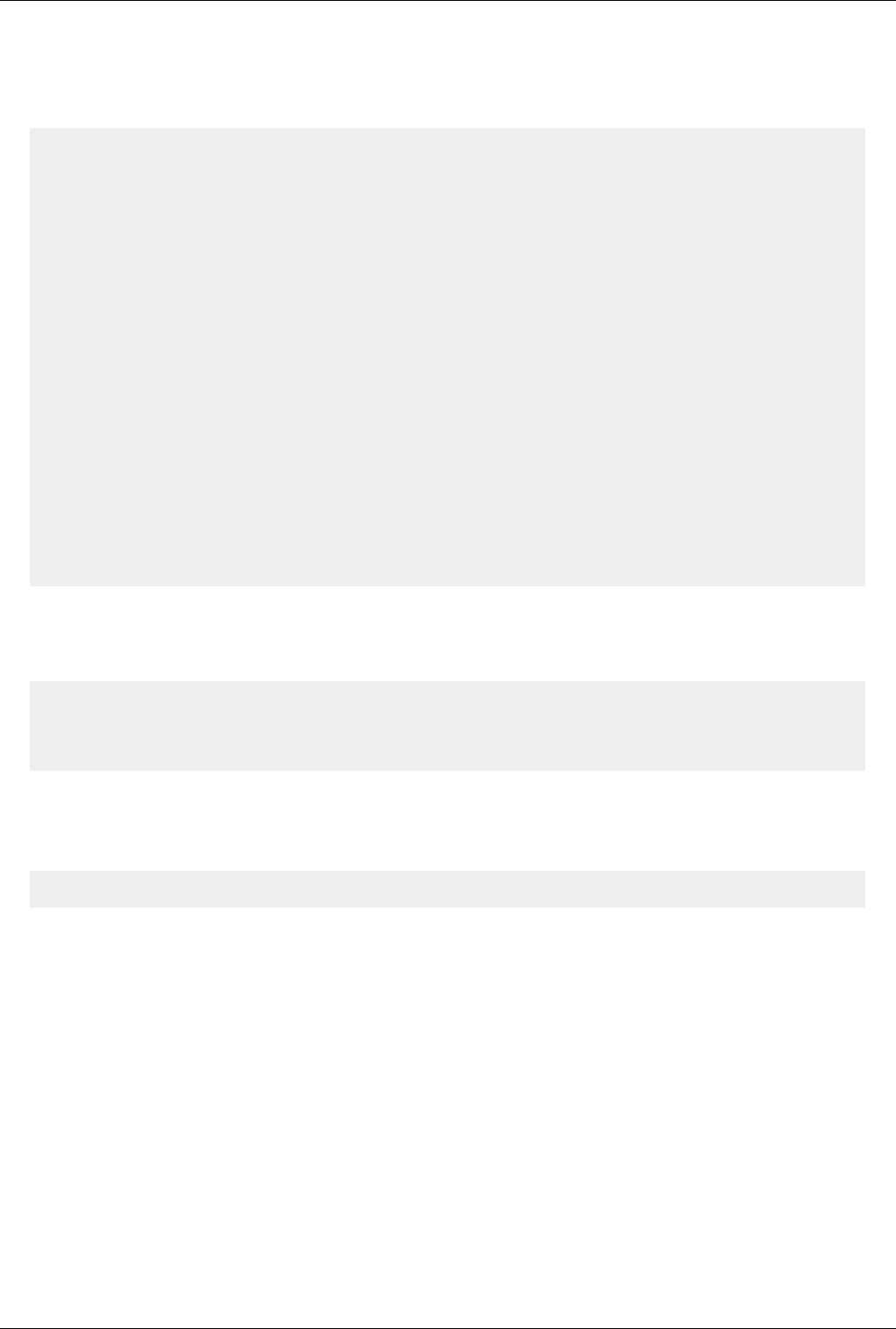

Calculator 92

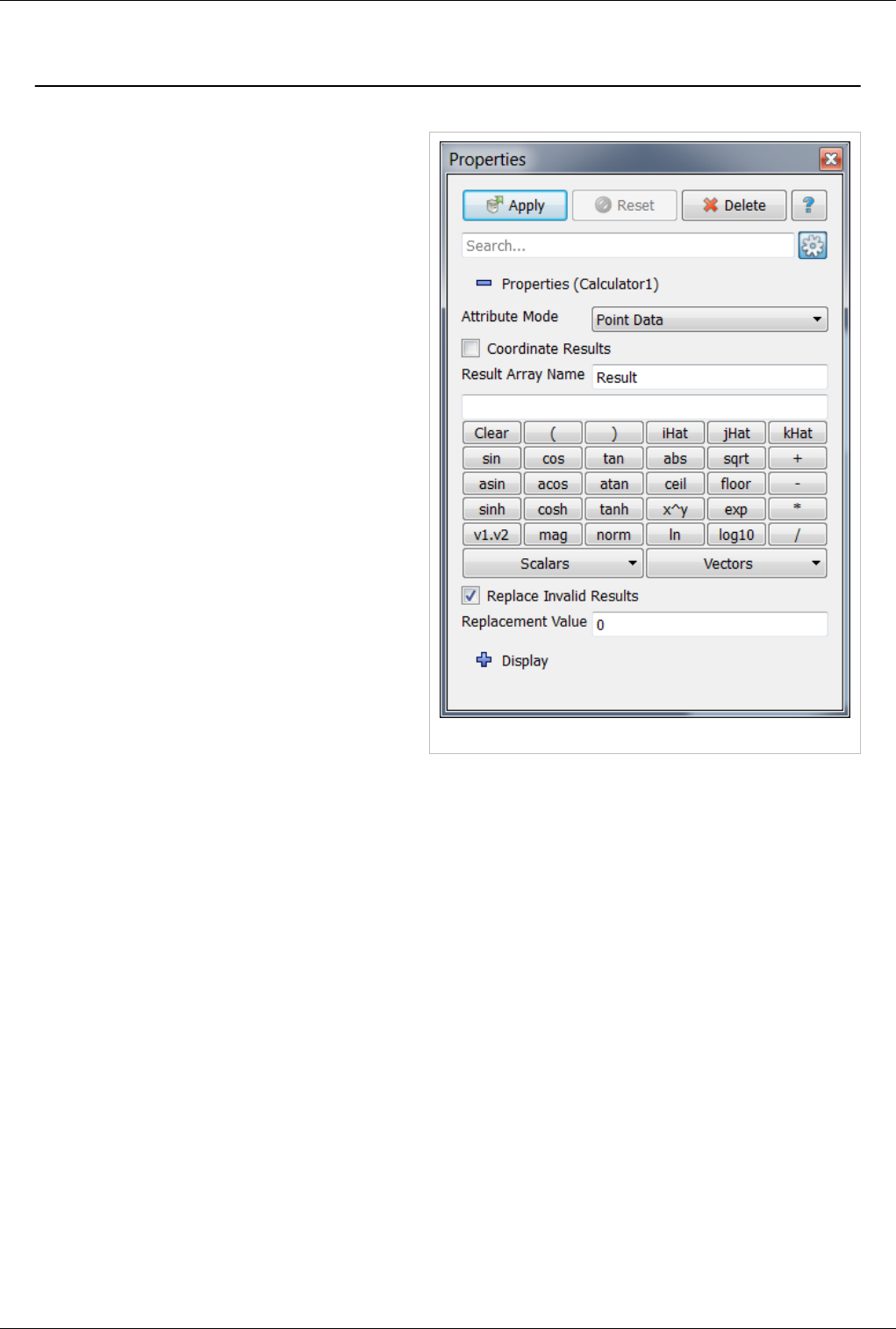

Python Calculator 94

Spreadsheet View 99

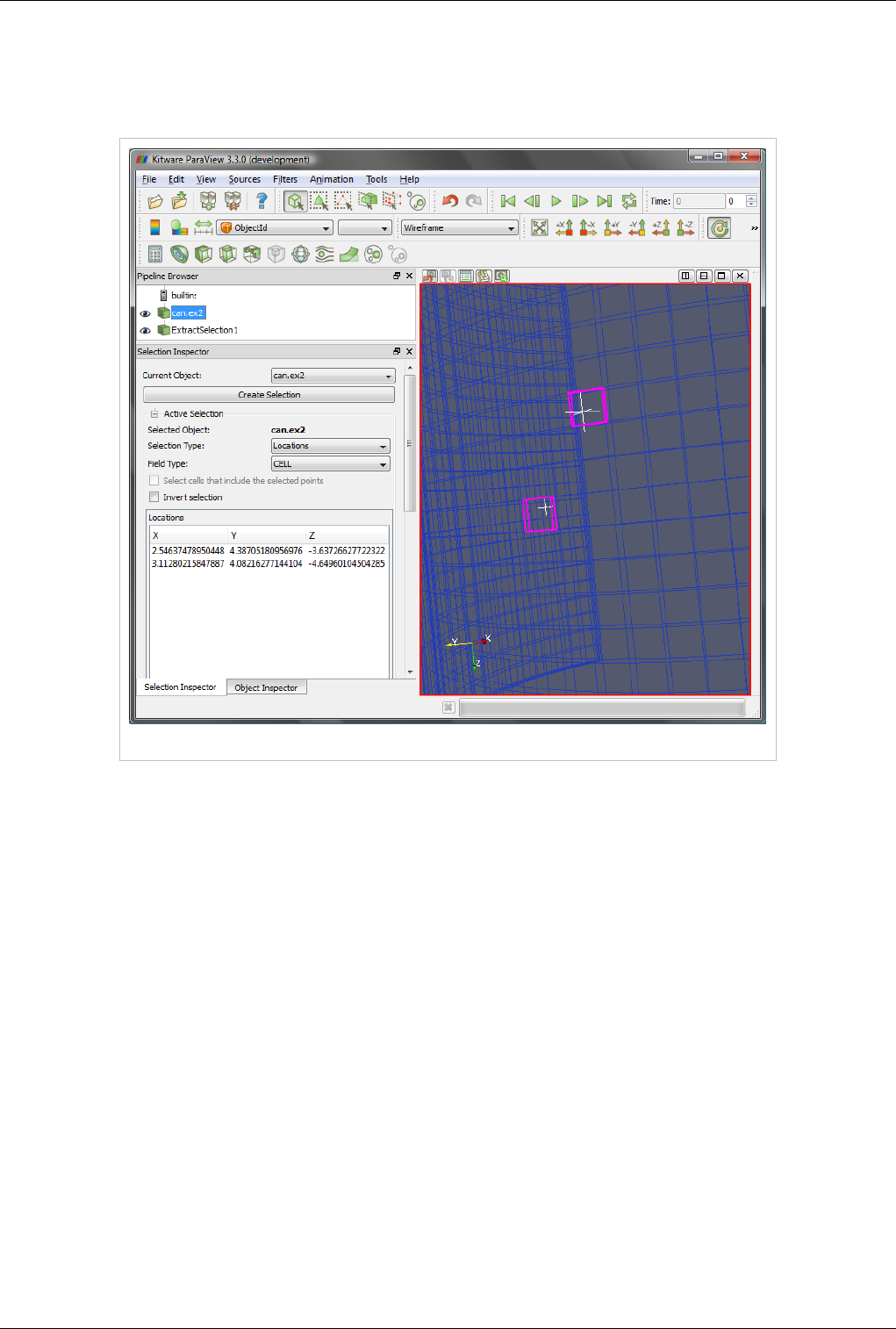

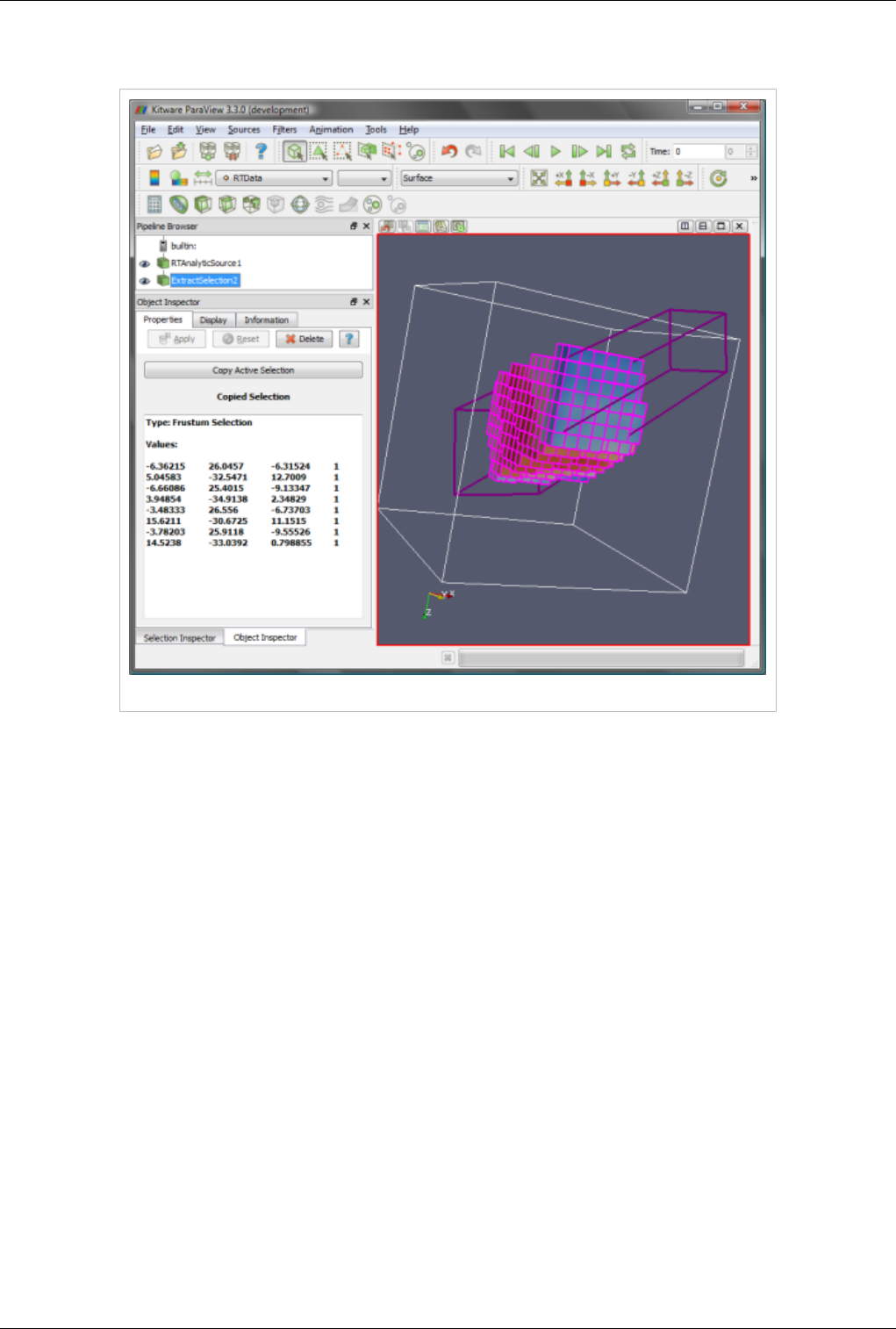

Selection 102

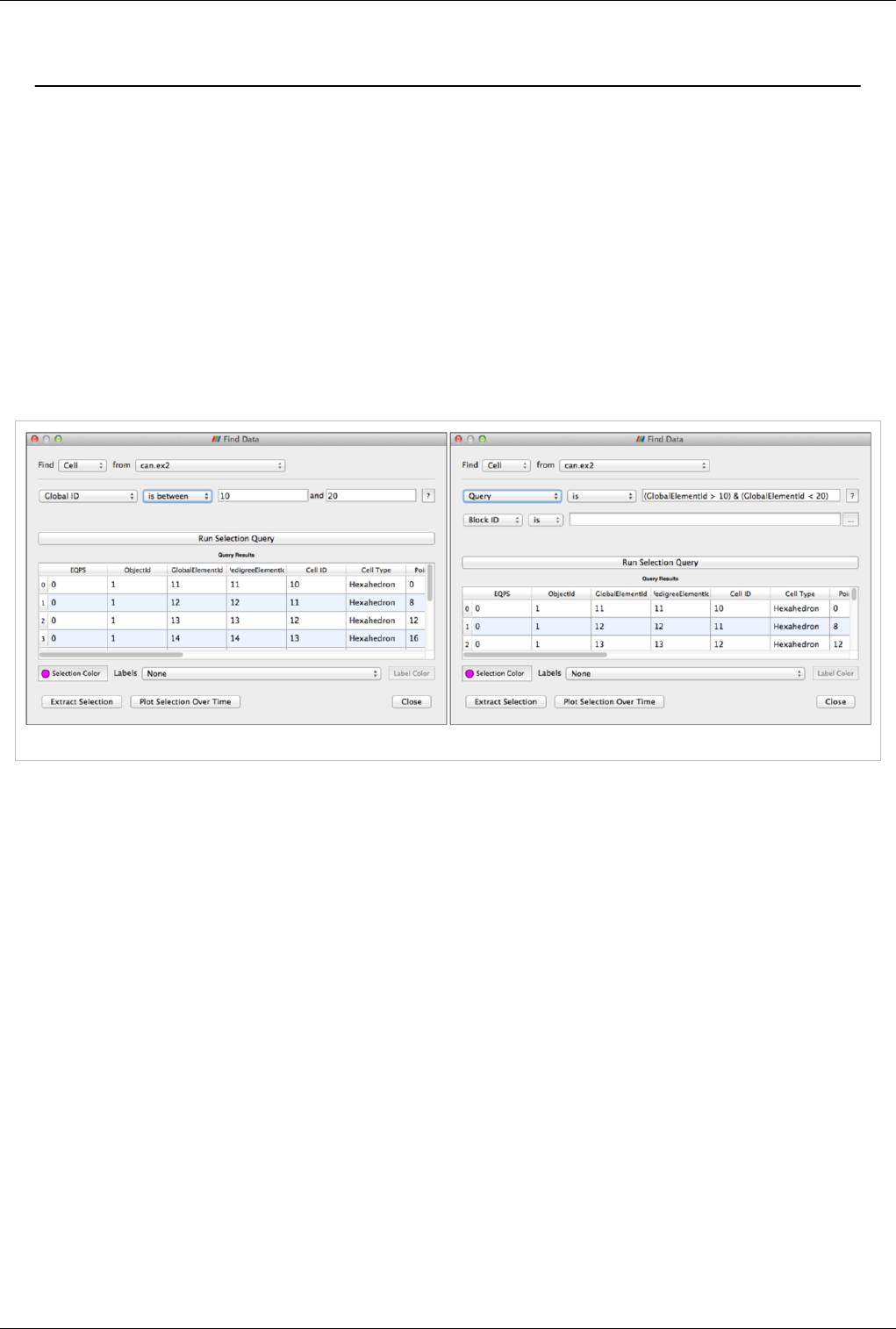

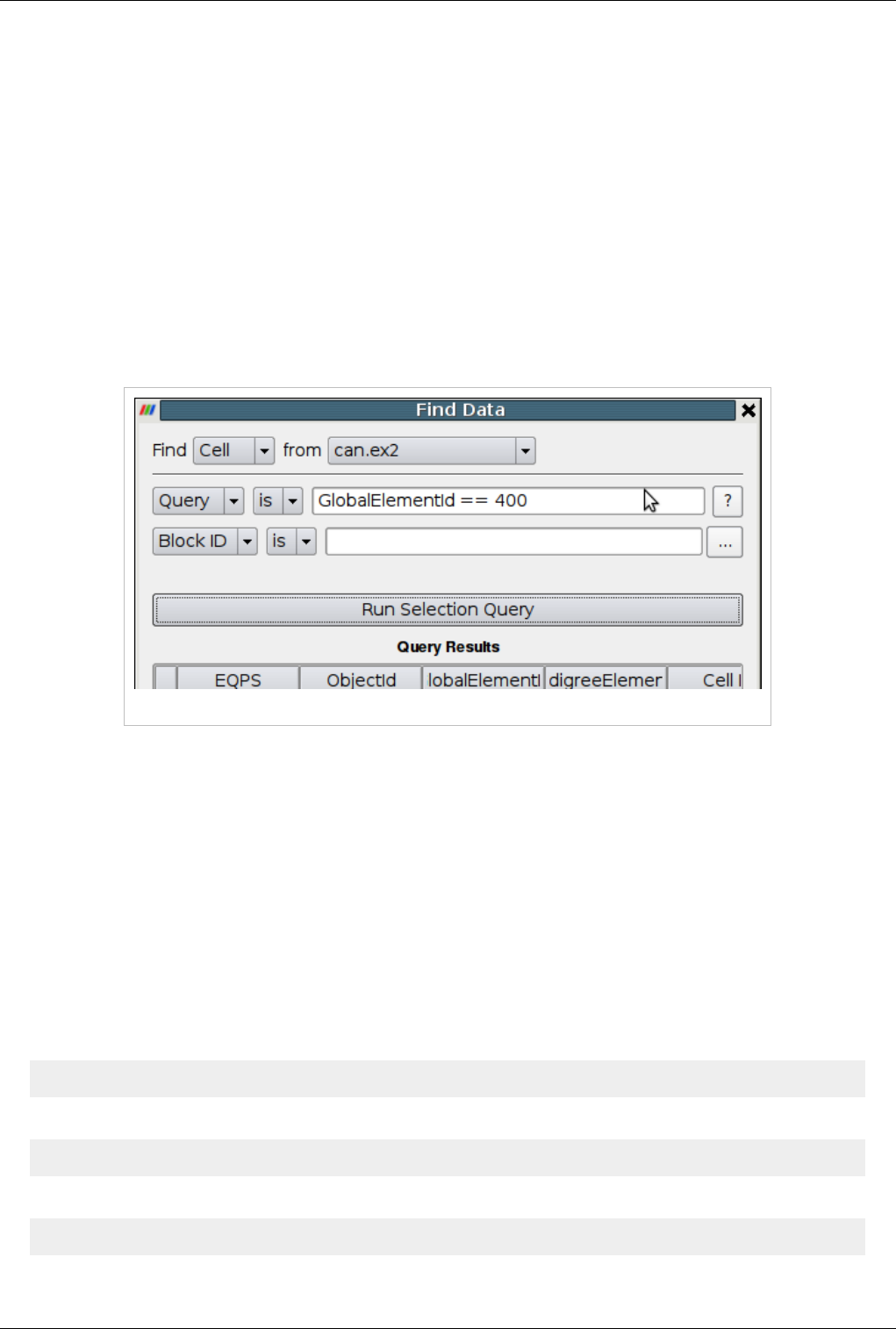

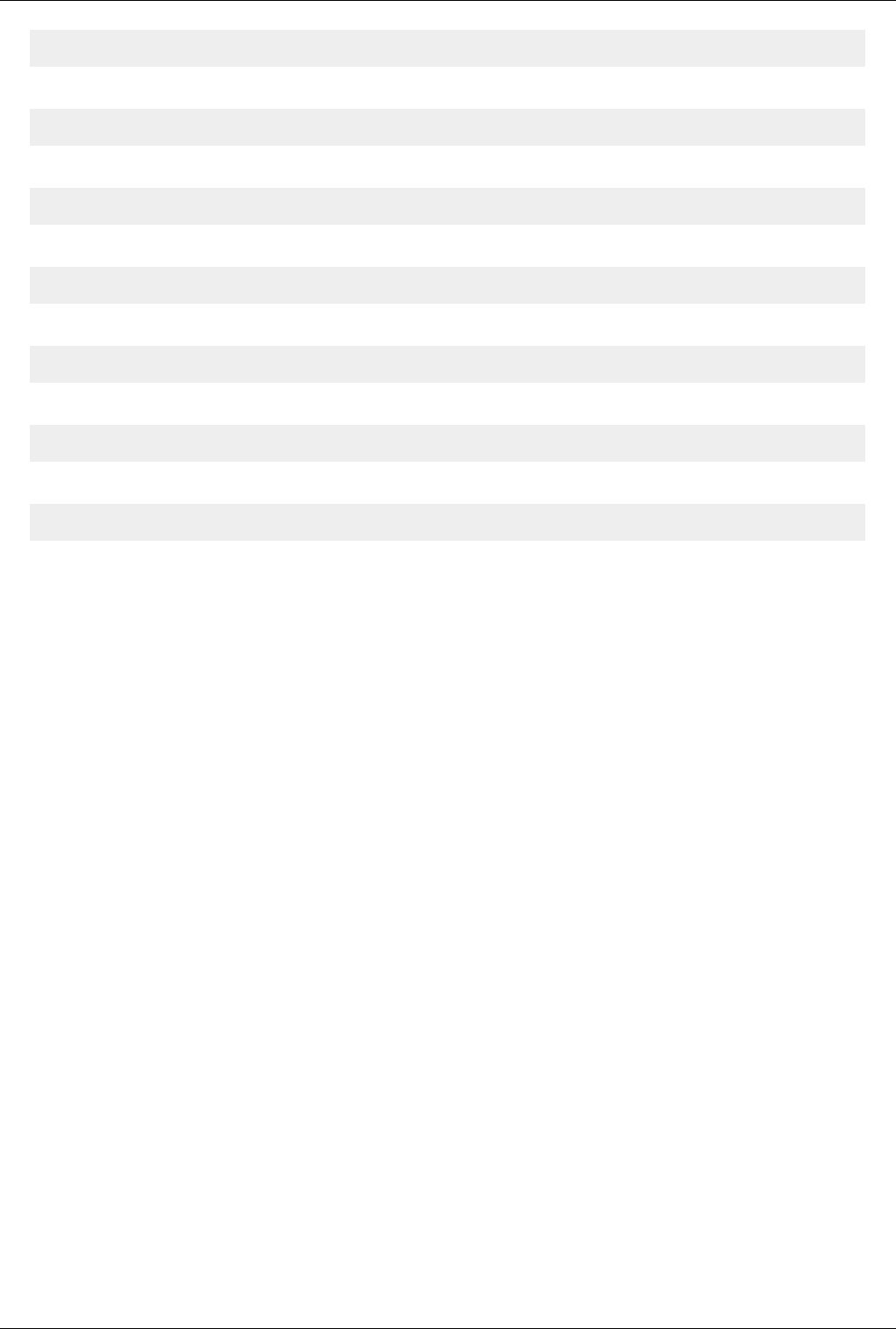

Querying for Data 111

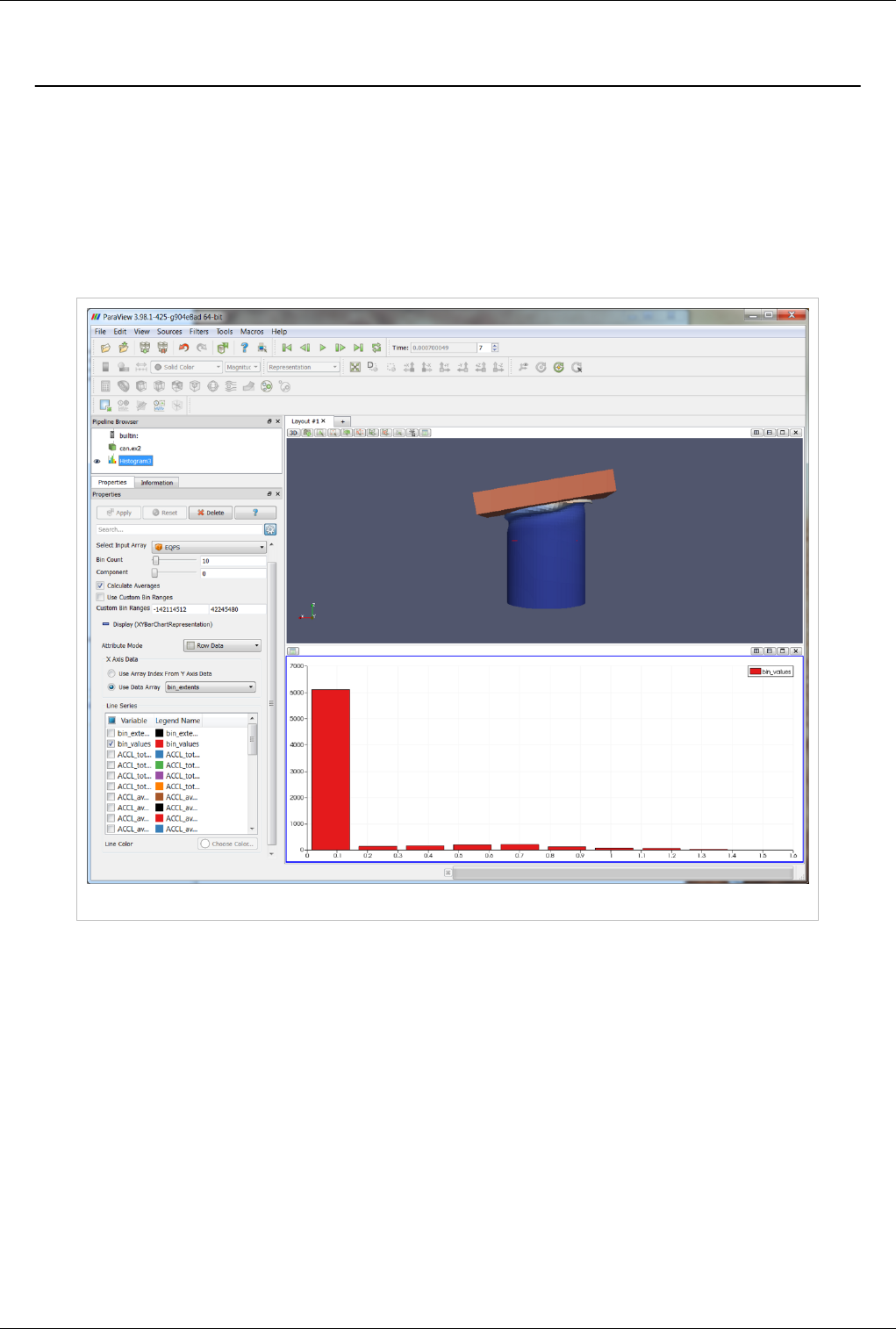

Histogram 115

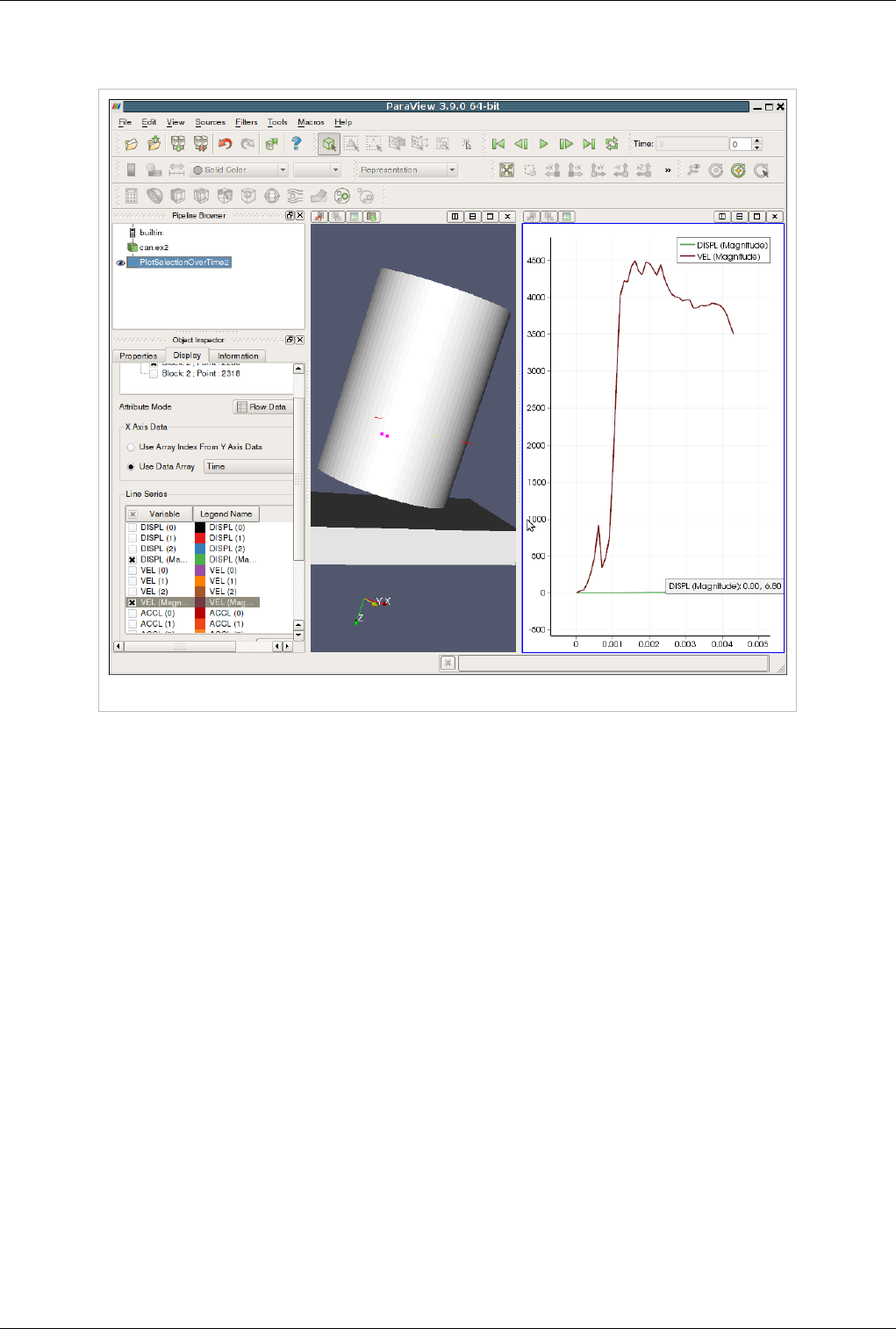

Plotting and Probing Data 116

Saving Data 118

Saving Data 118

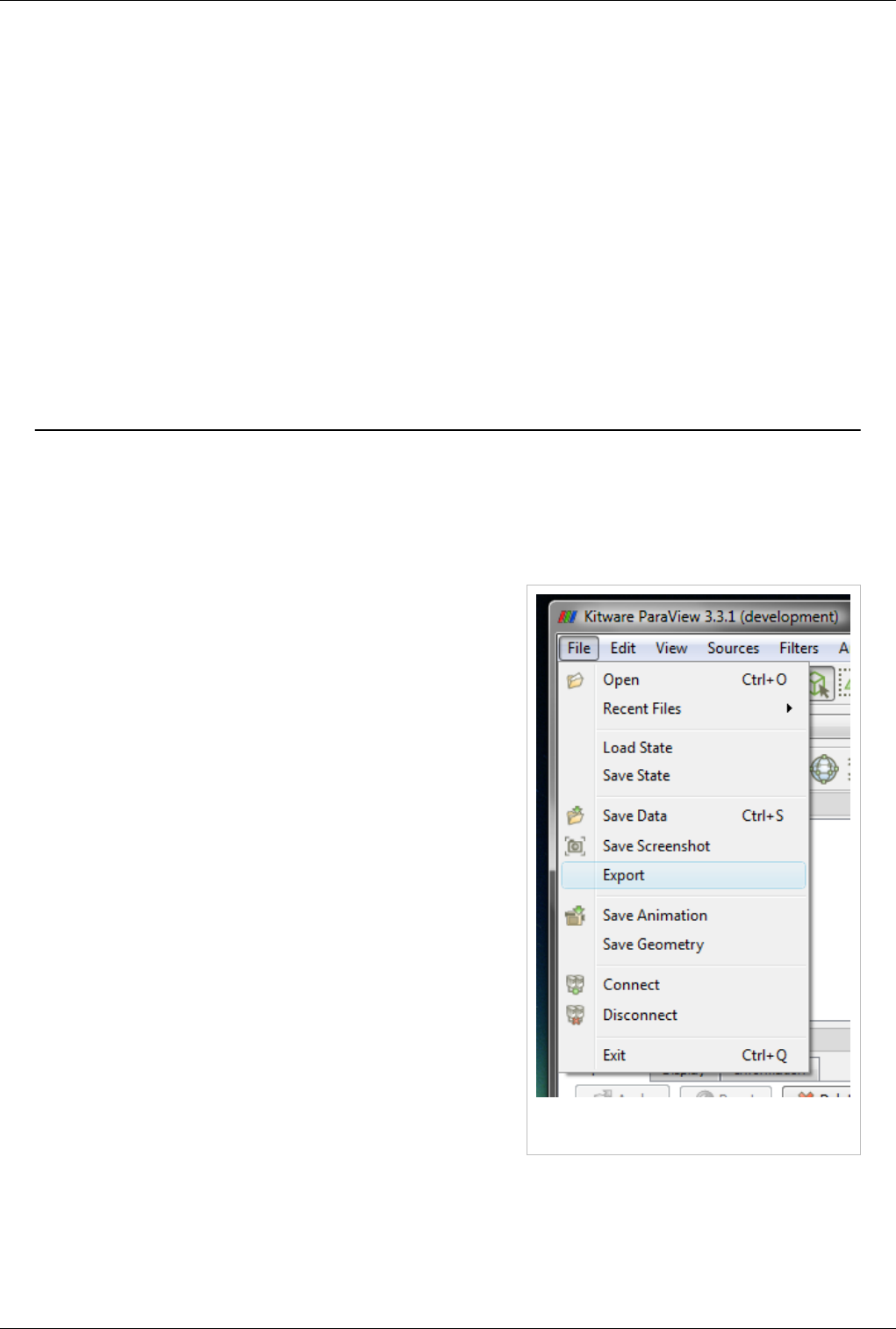

Exporting Scenes 121

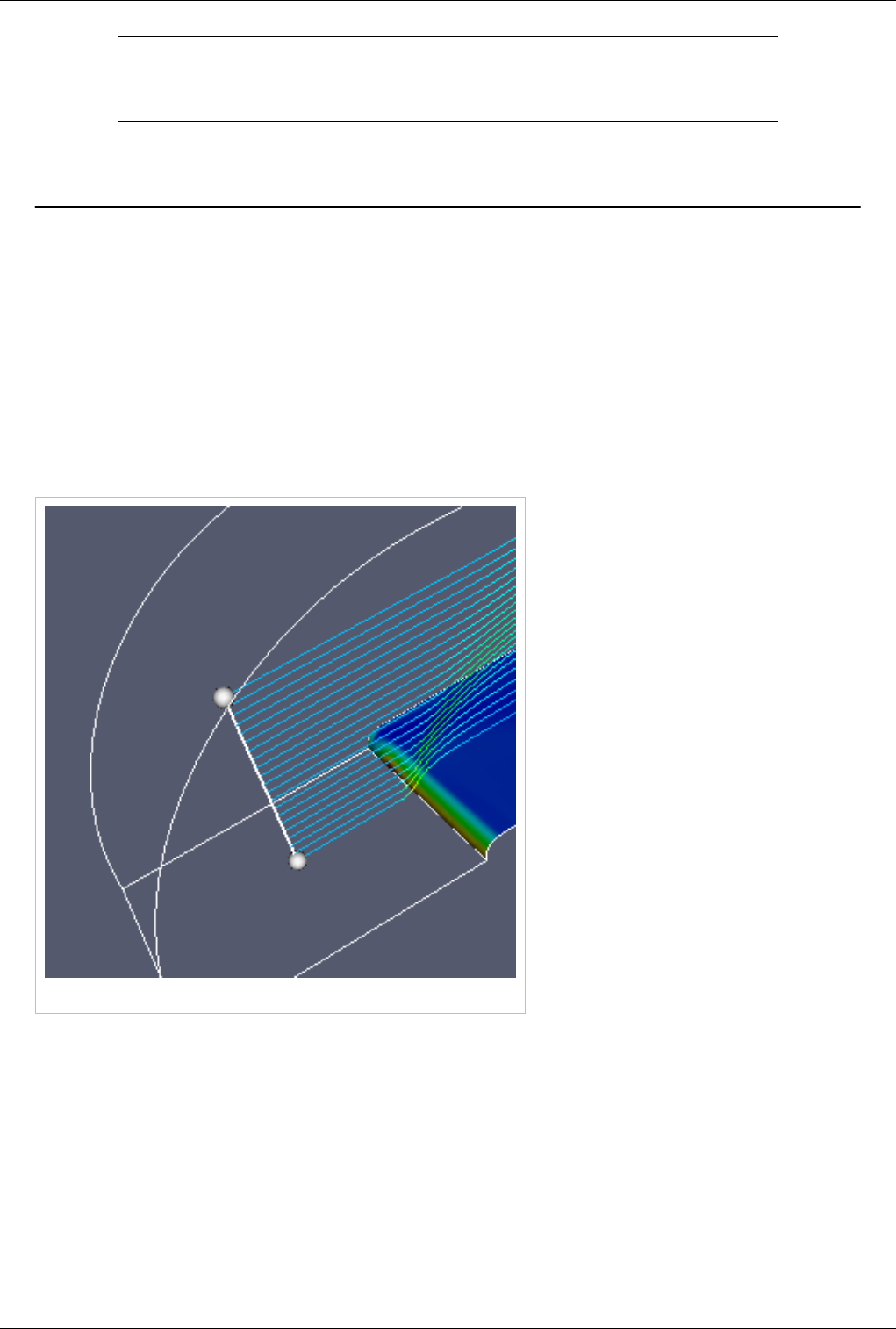

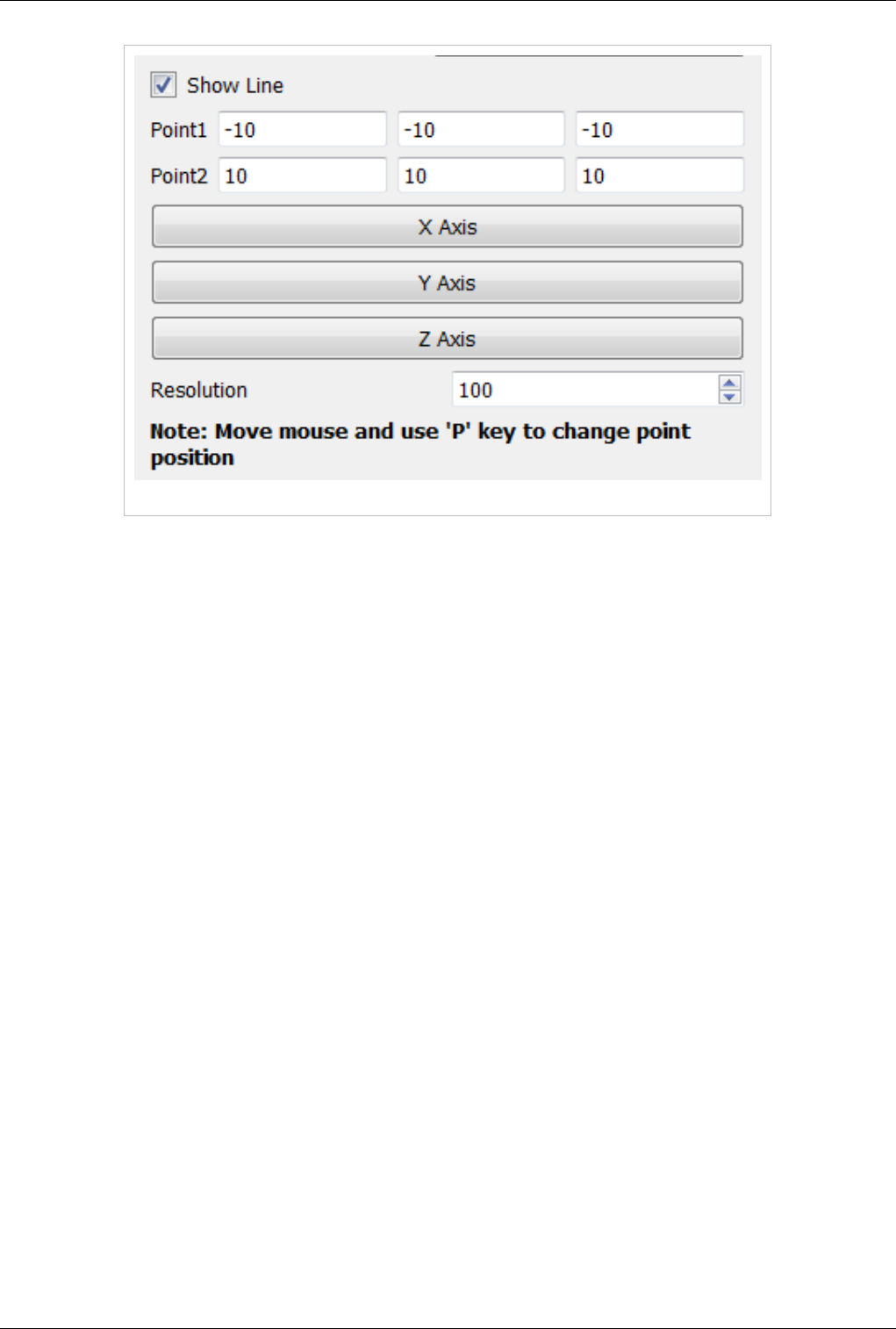

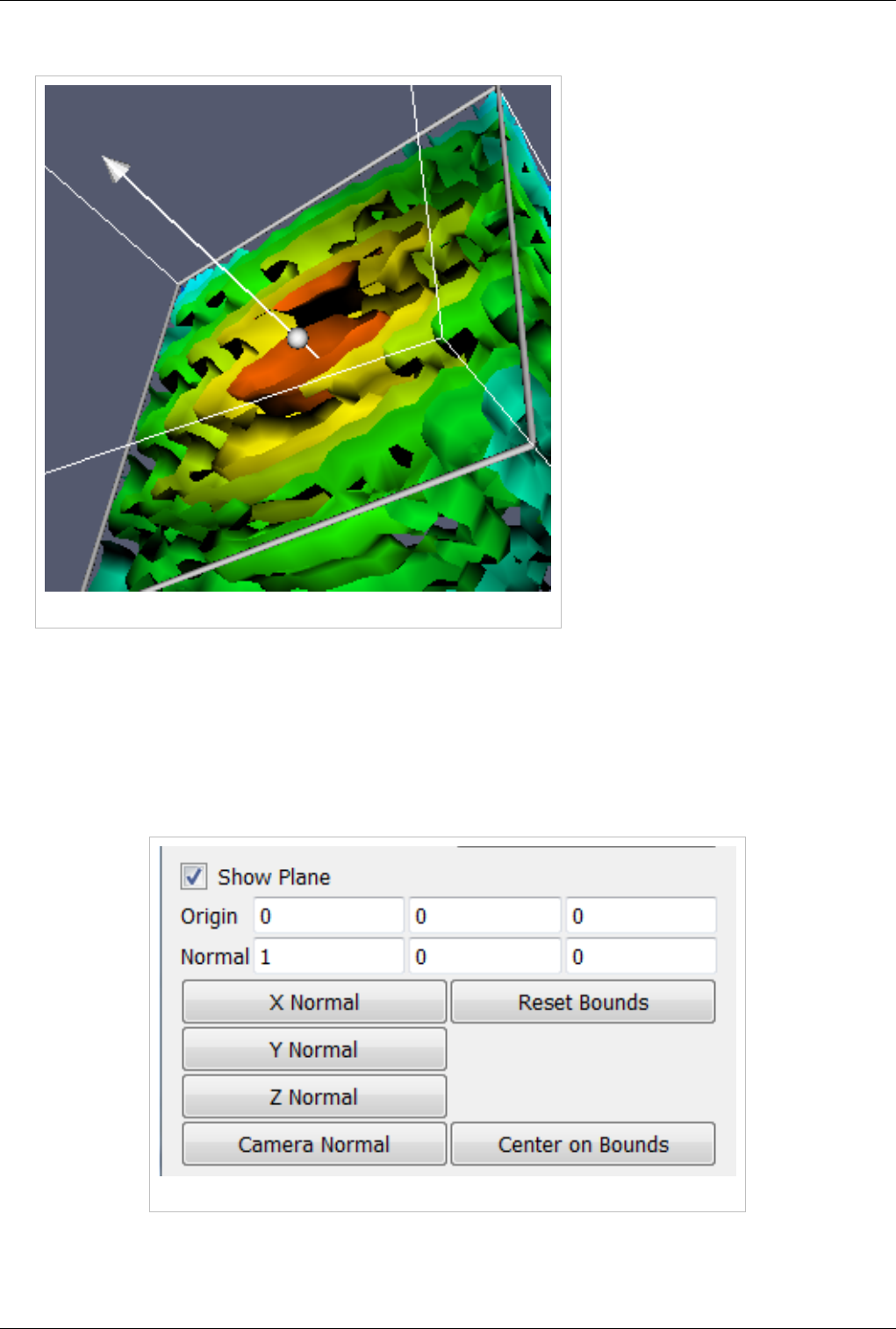

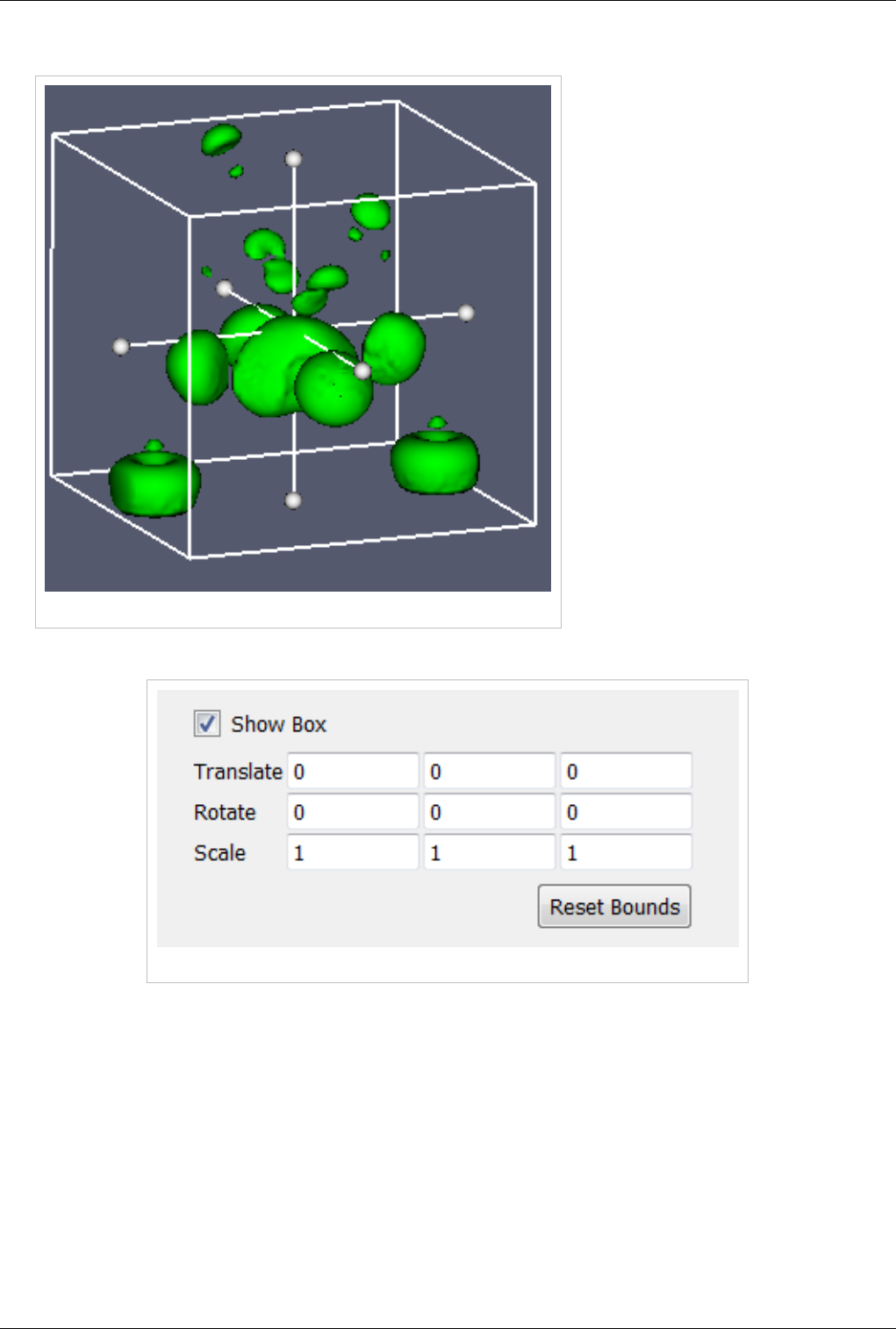

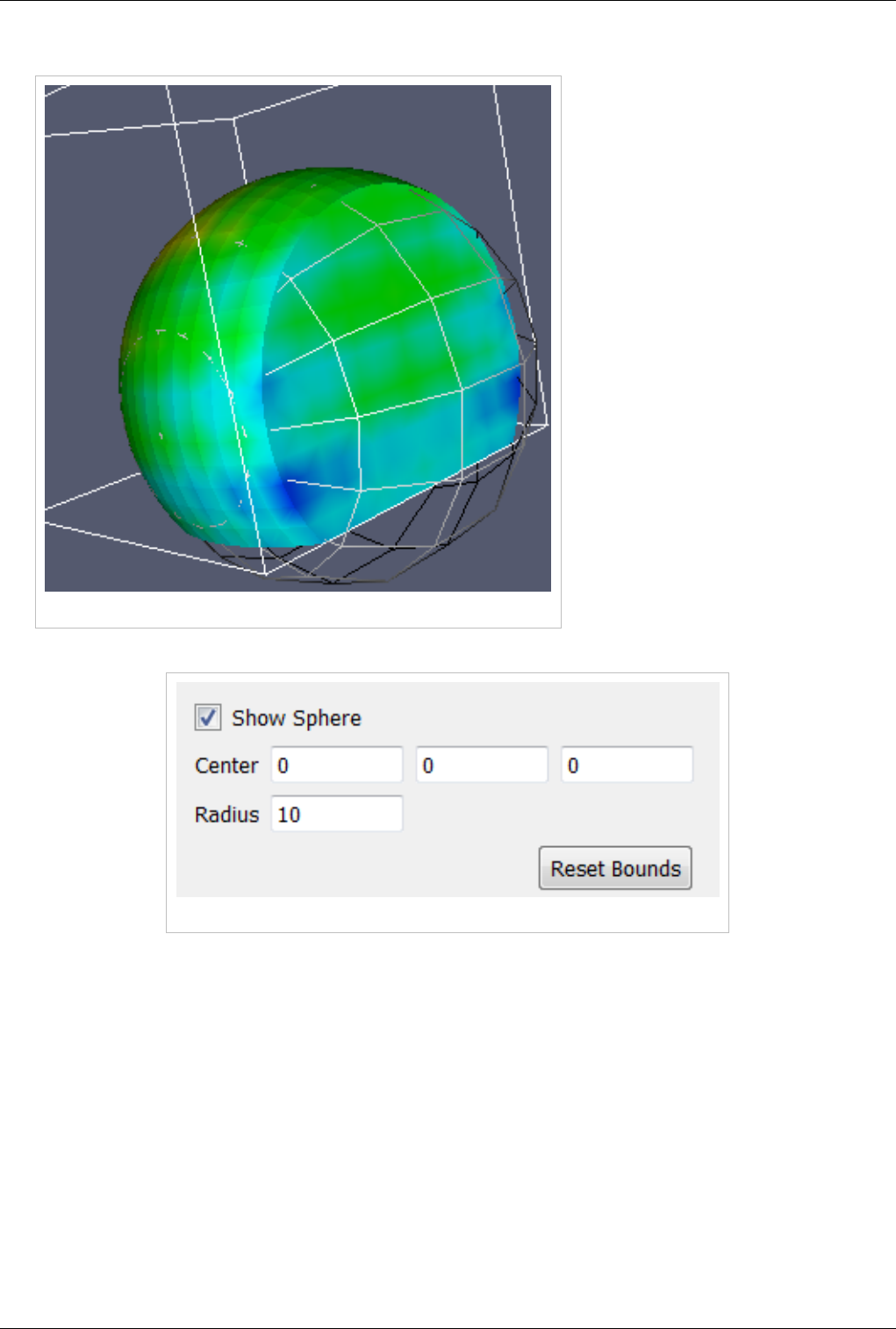

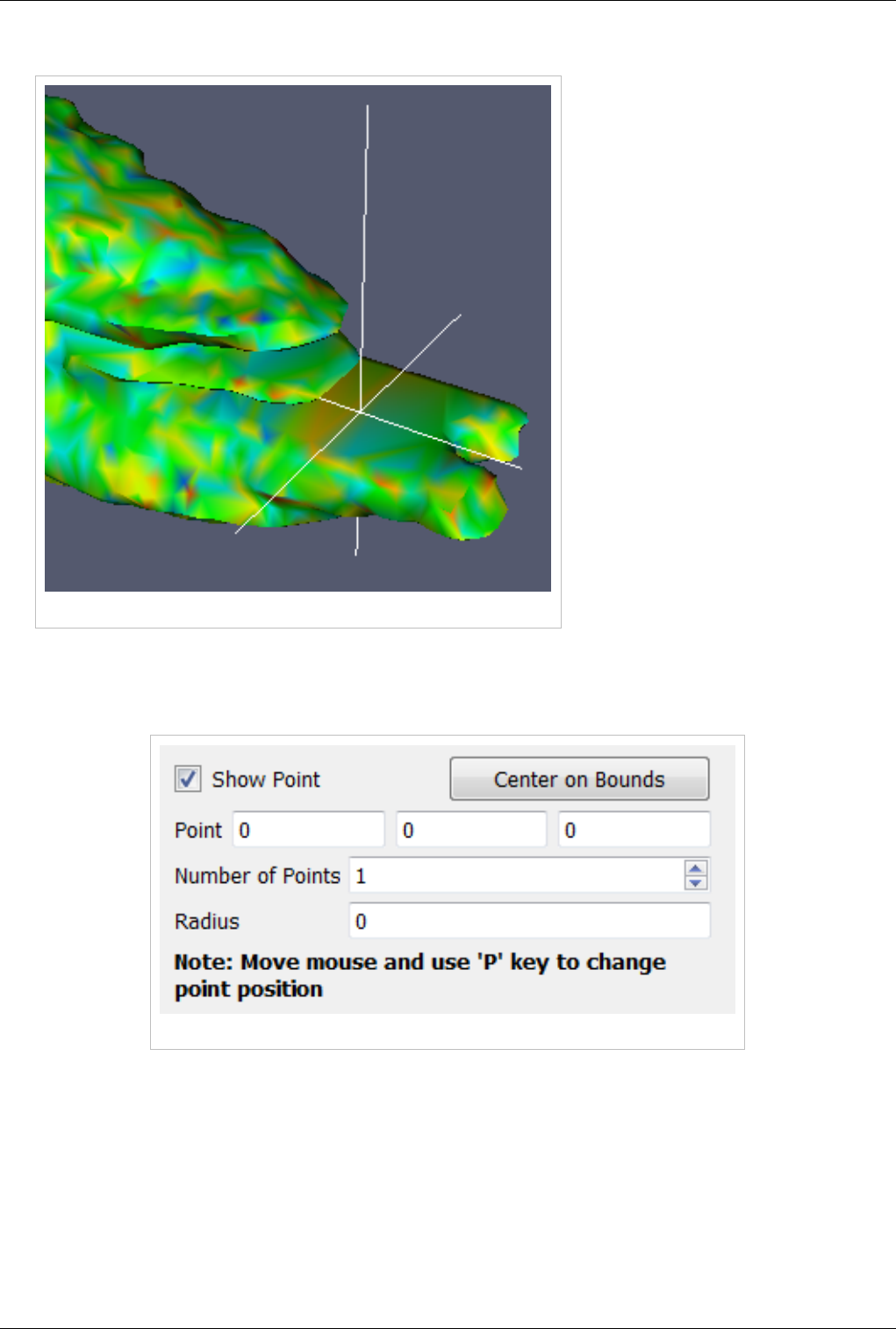

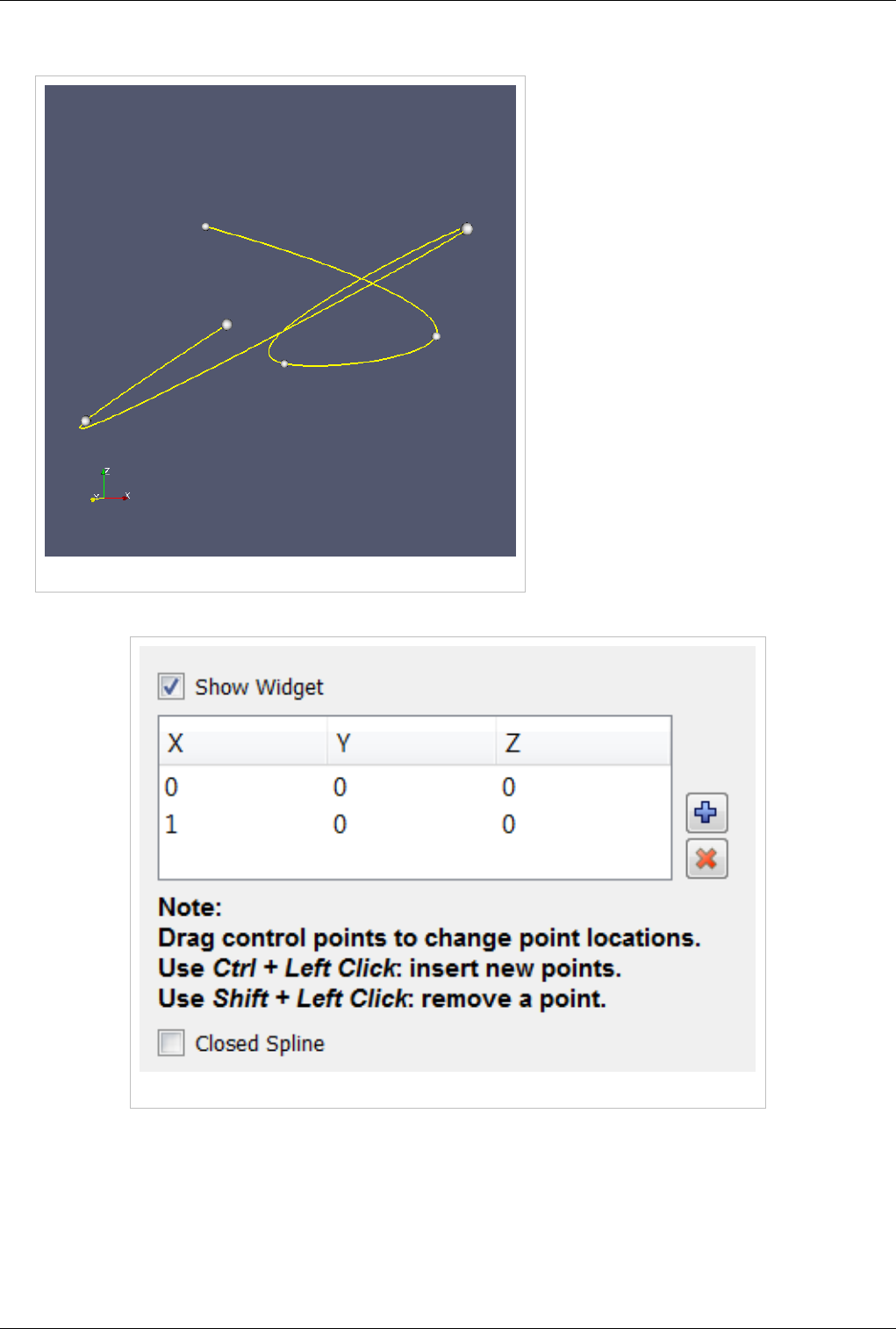

3D Widgets 123

Manipulating data in the 3D view 123

Annotation 130

Annotation 130

Animation 141

Animation View 141

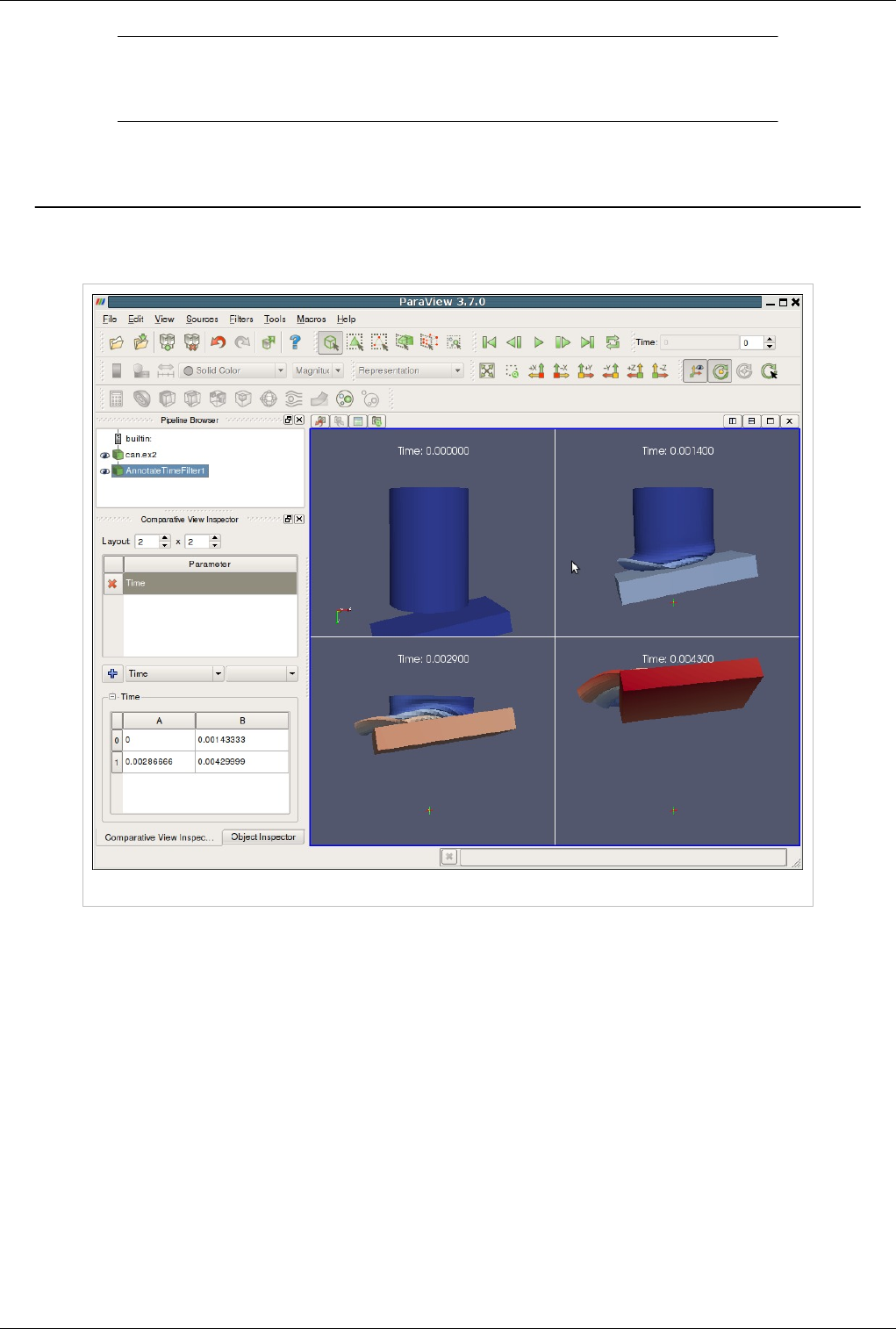

Comparative Visualization 149

Comparative Views 149

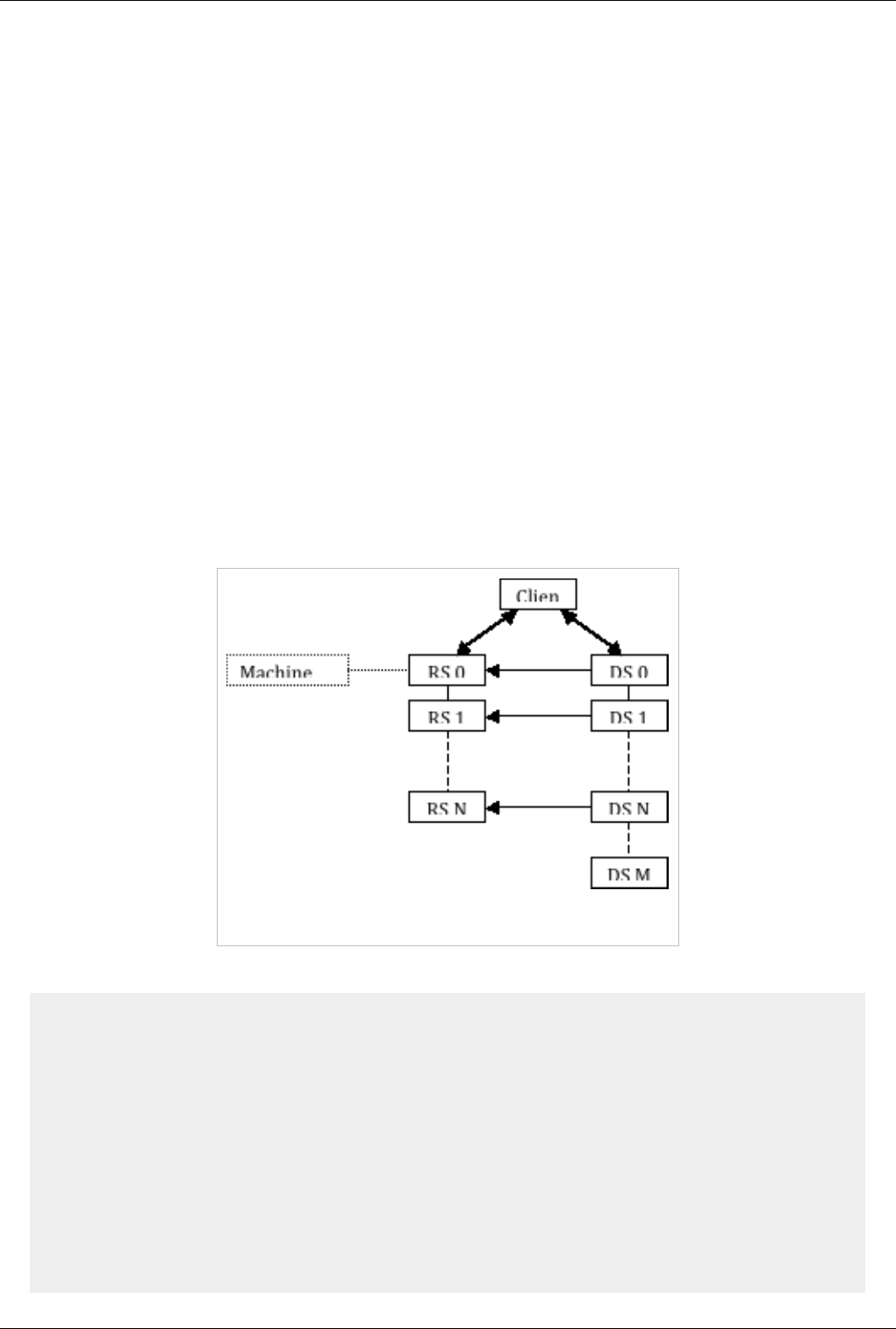

Remote and Parallel Large Data Visualization 156

Parallel ParaView 156

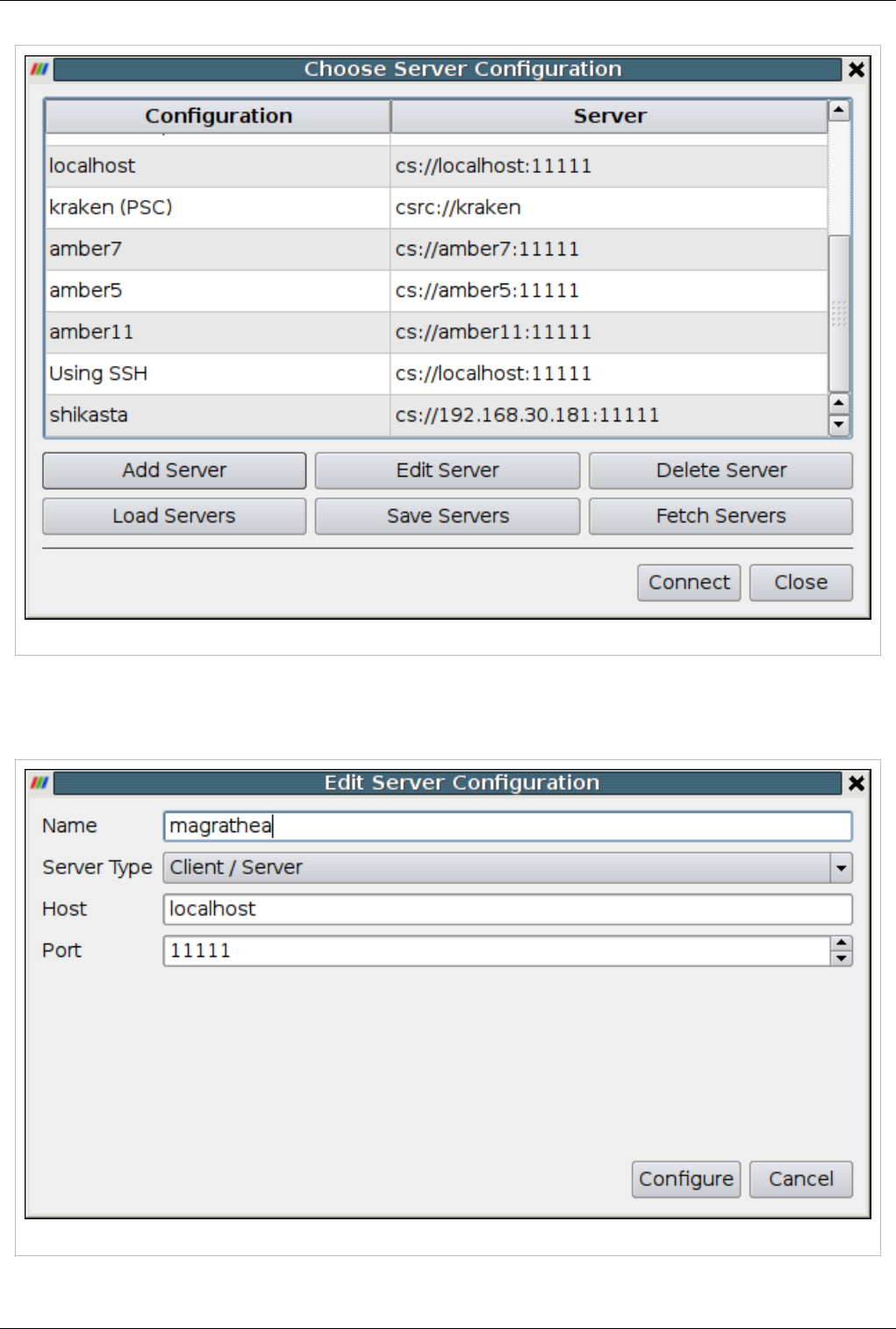

Starting the Server(s) 159

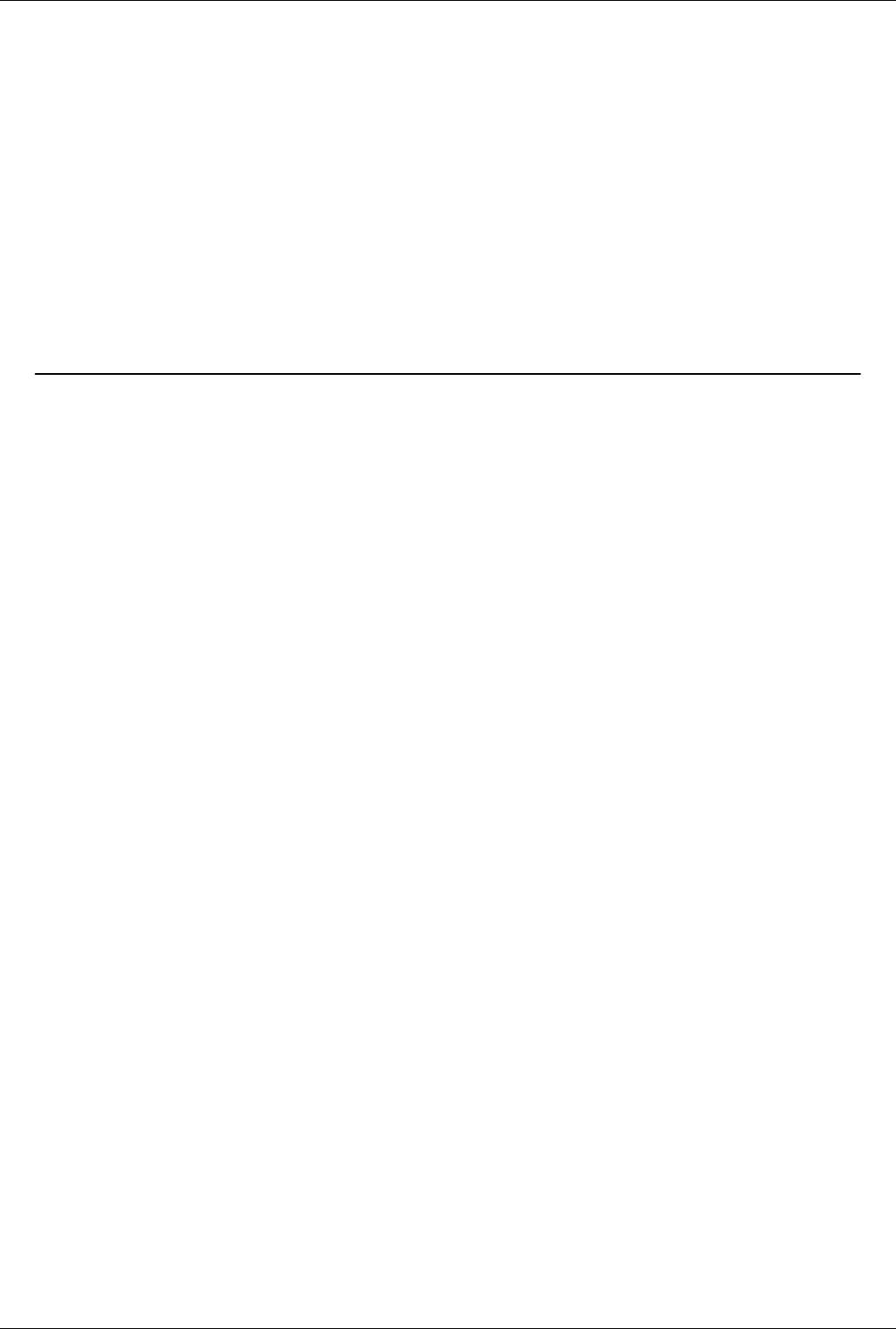

Connecting to the Server 164

Distributing/Obtaining Server Connection Configurations 168

Parallel Rendering and Large Displays 172

About Parallel Rendering 172

Parallel Rendering 172

Tile Display Walls 178

CAVE Displays 180

Scripted Control 188

Interpreted ParaView 188

Python Scripting 188

Tools for Python Scripting 210

Batch Processing 212

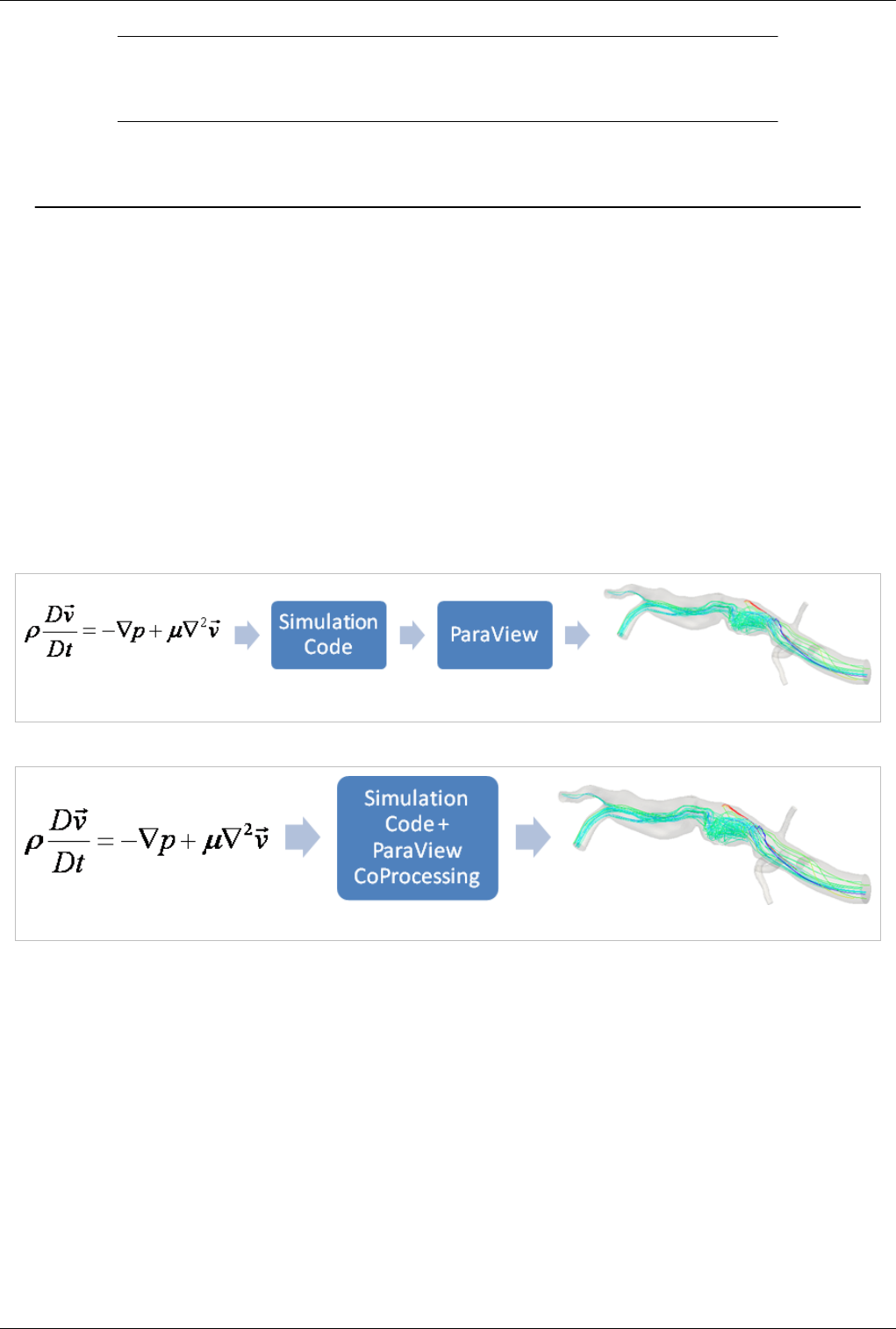

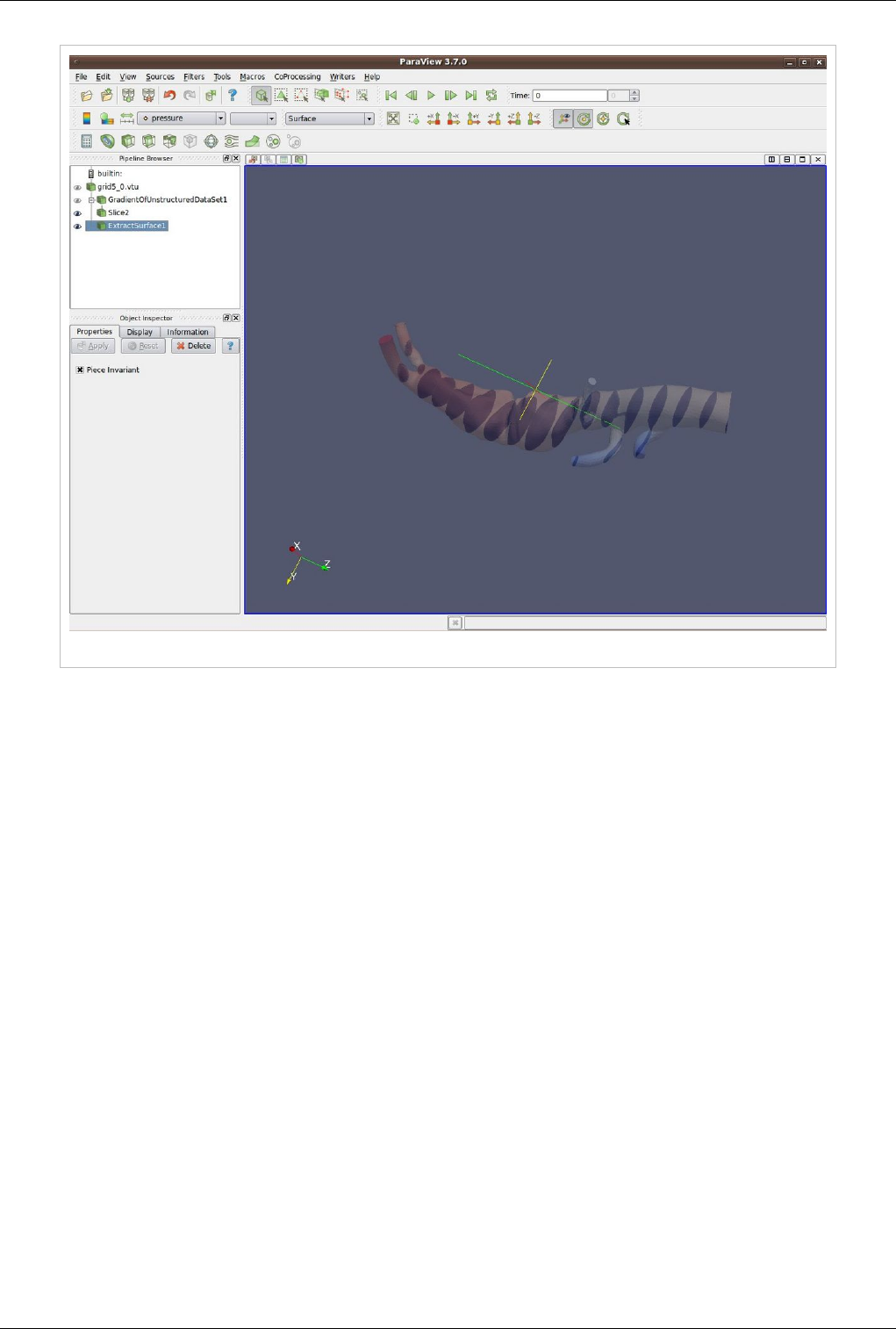

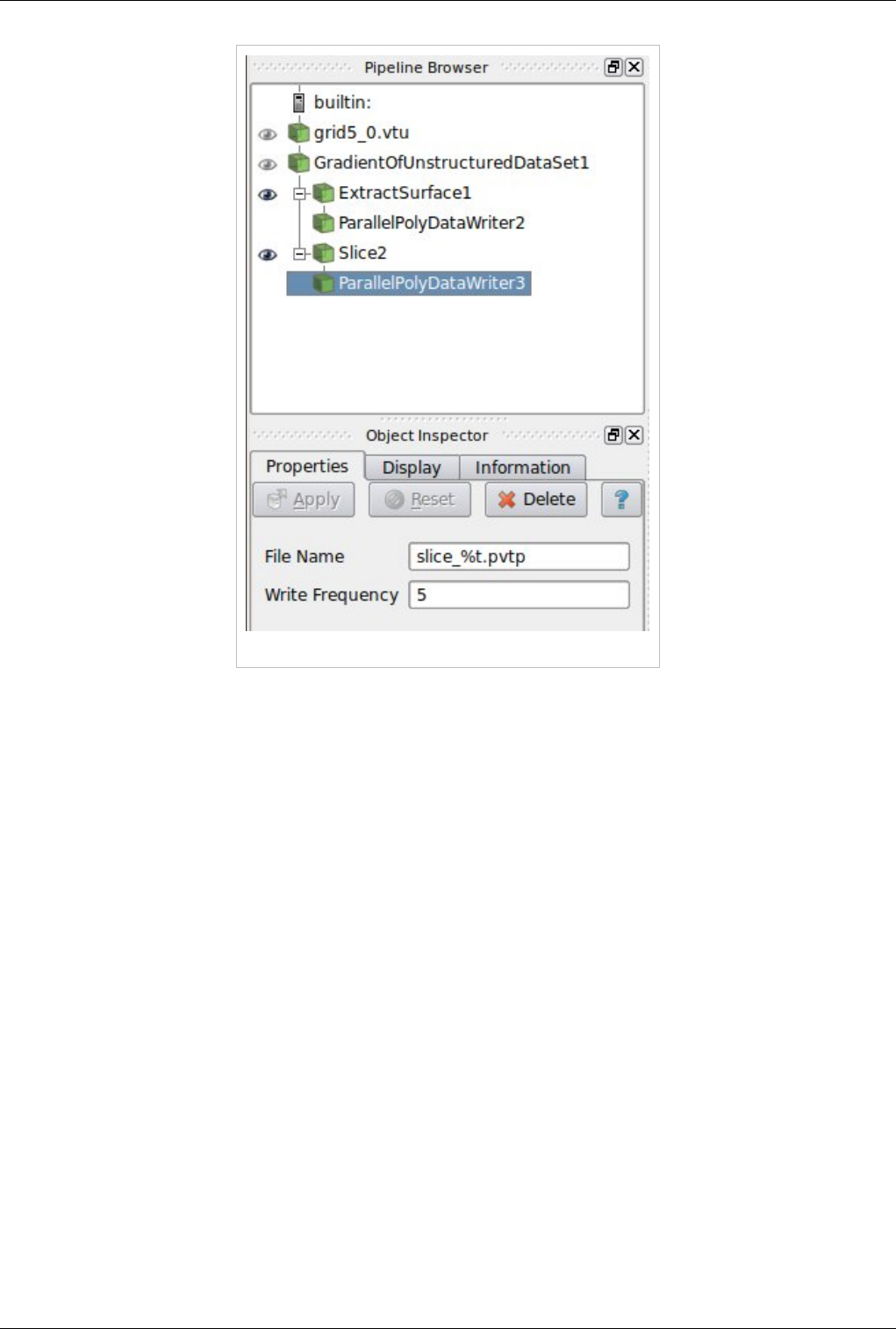

In-Situ/CoProcessing 217

CoProcessing 217

C++ CoProcessing example 229

Python CoProcessing Example 236

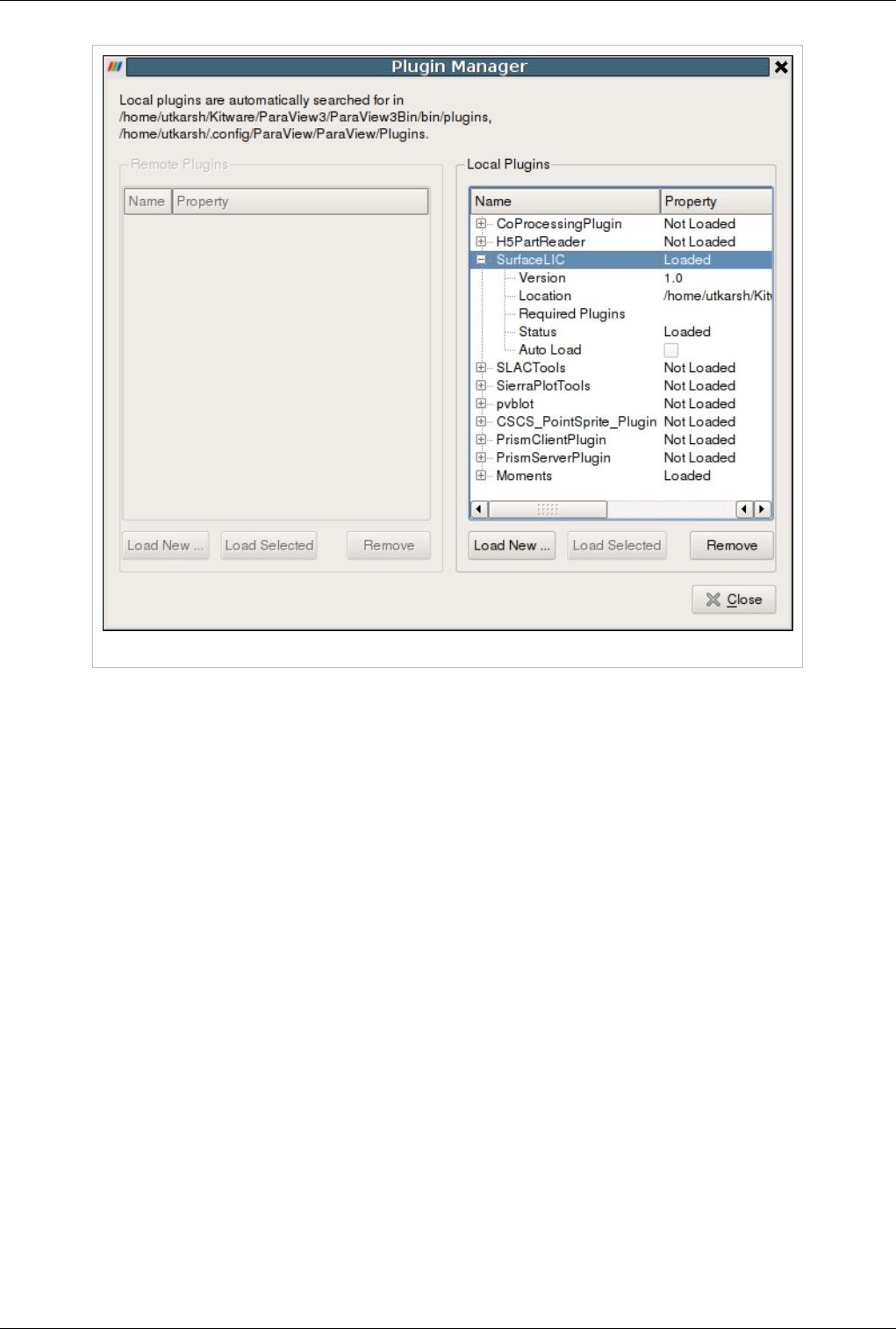

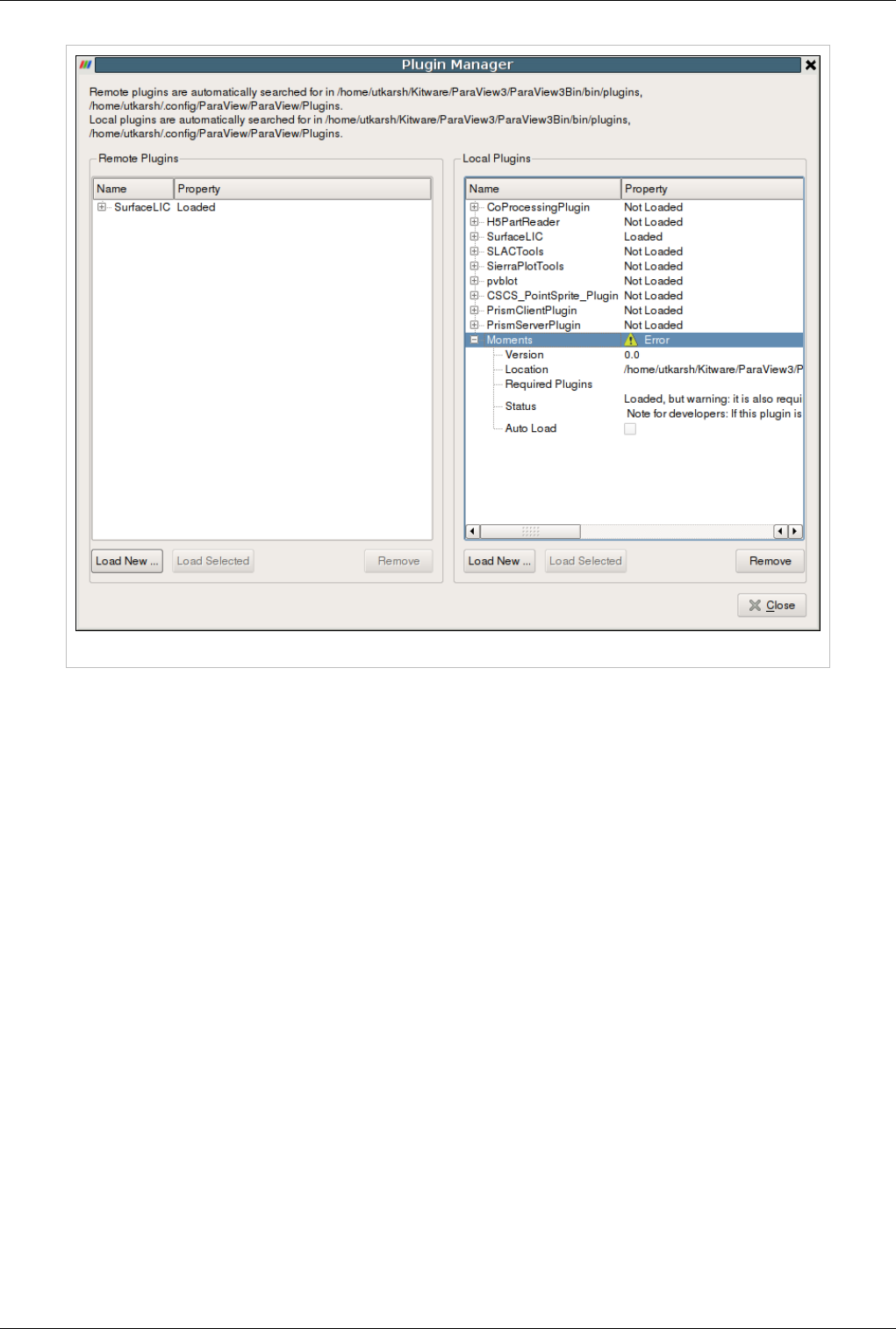

Plugins 242

What are Plugins? 242

Included Plugins 243

Loading Plugins 245

Appendix 248

Command Line Arguments 248

Application Settings 255

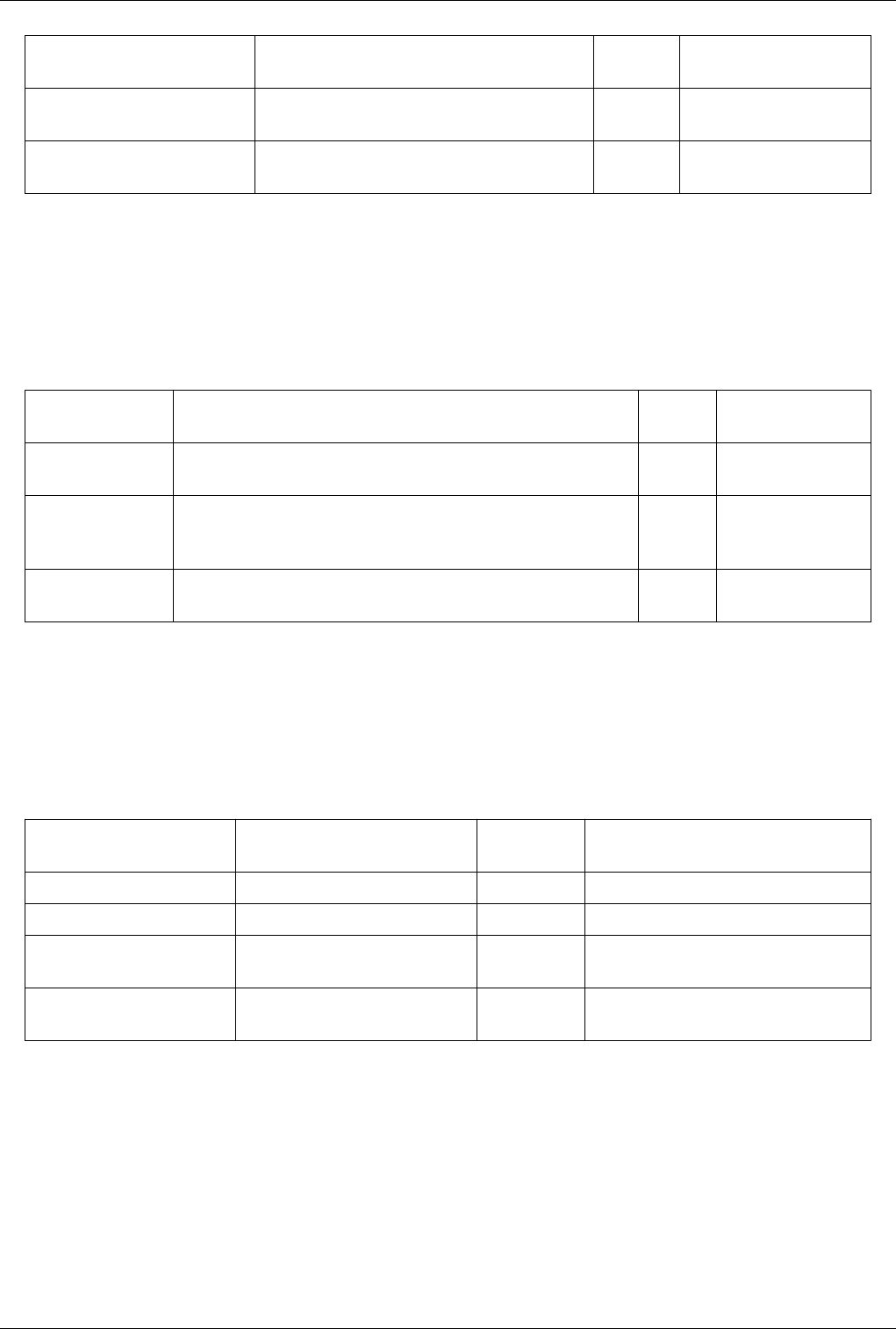

List of Readers 264

List of Sources 293

List of Filters 308

List of Writers 397

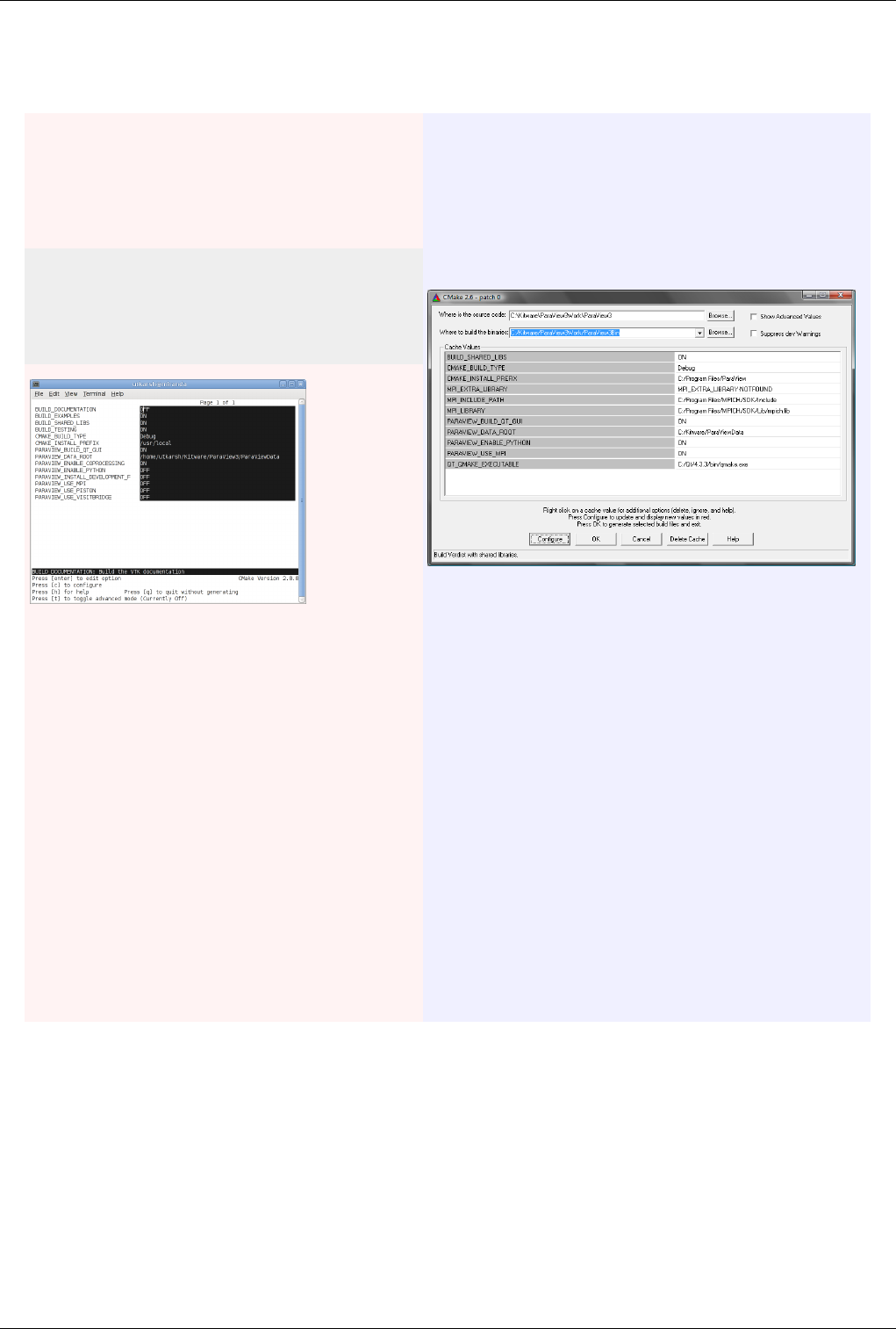

How to build/compile/install 405

Building ParaView with Mesa3D 414

How to write parallel VTK readers 416

References

Article Sources and Contributors 423

Image Sources, Licenses and Contributors 425

Article Licenses

License 429

1

Introduction

About Paraview

What is ParaView?

ParaView is an open-source, multi-platform application for the visualization and analysis of scientific datasets,

primarily those that are defined natively in a two- or three-dimensional space including those that extend into the

temporal dimension.

The front end graphical user interface (GUI) has an open, flexible and intuitive user interface that still gives you

fine-grained and open-ended control of the data manipulation and display processing needed to explore and present

complex data as you see fit.

ParaView has extensive scripting and batch processing capabilities. The standard scripting interface uses the widely

used python programming language for scripted control. As with the GUI, the python scripted control is easy to

learn, including the ability to record actions in the GUI and save them out as succinct human readable python

programs. It is also powerful, with the ability to write scripted filters that run on the server that have access to every

bit of your data on a large parallel machine.

ParaView's data processing and rendering components are built upon a modular and scalable distributed-memory

parallel architecture in which many processors operate synchronously on different portions of the data. ParaView's

scalable architecture allows you to run ParaView directly on anything from a small netbook class machine up to the

world's largest supercomputer. However, the size of the datasets ParaView can handle in practice varies widely

depending on the size of the machine that ParaView's server components are run on. Thus people frequently do both,

taking advantage of ParaView's client/server architecture to connect to and control the supercomputer from the

netbook.

ParaView is meant to be easily extended and customized into new applications and be used by or make use of other

tools. Correspondingly there are a number of different interfaces to ParaView's data processing and visualization

engine, for example the web-based ParaViewWeb [1]. This book does not focus on these nor does it describe in great

detail the programmers' interface to the ParaView engine. The book instead focuses on understanding the standard

ParaView GUI based application.

About Paraview 2

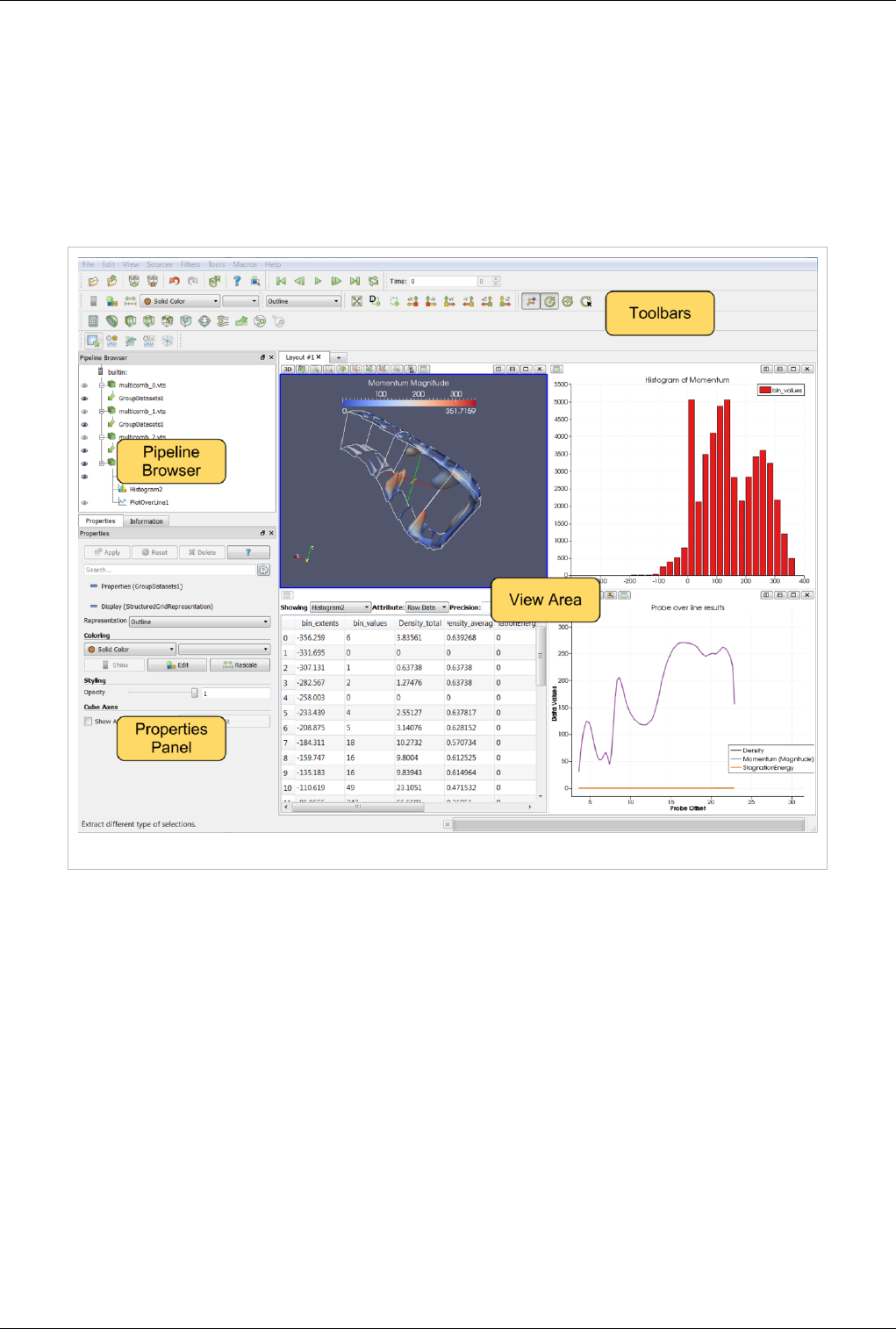

User Interface

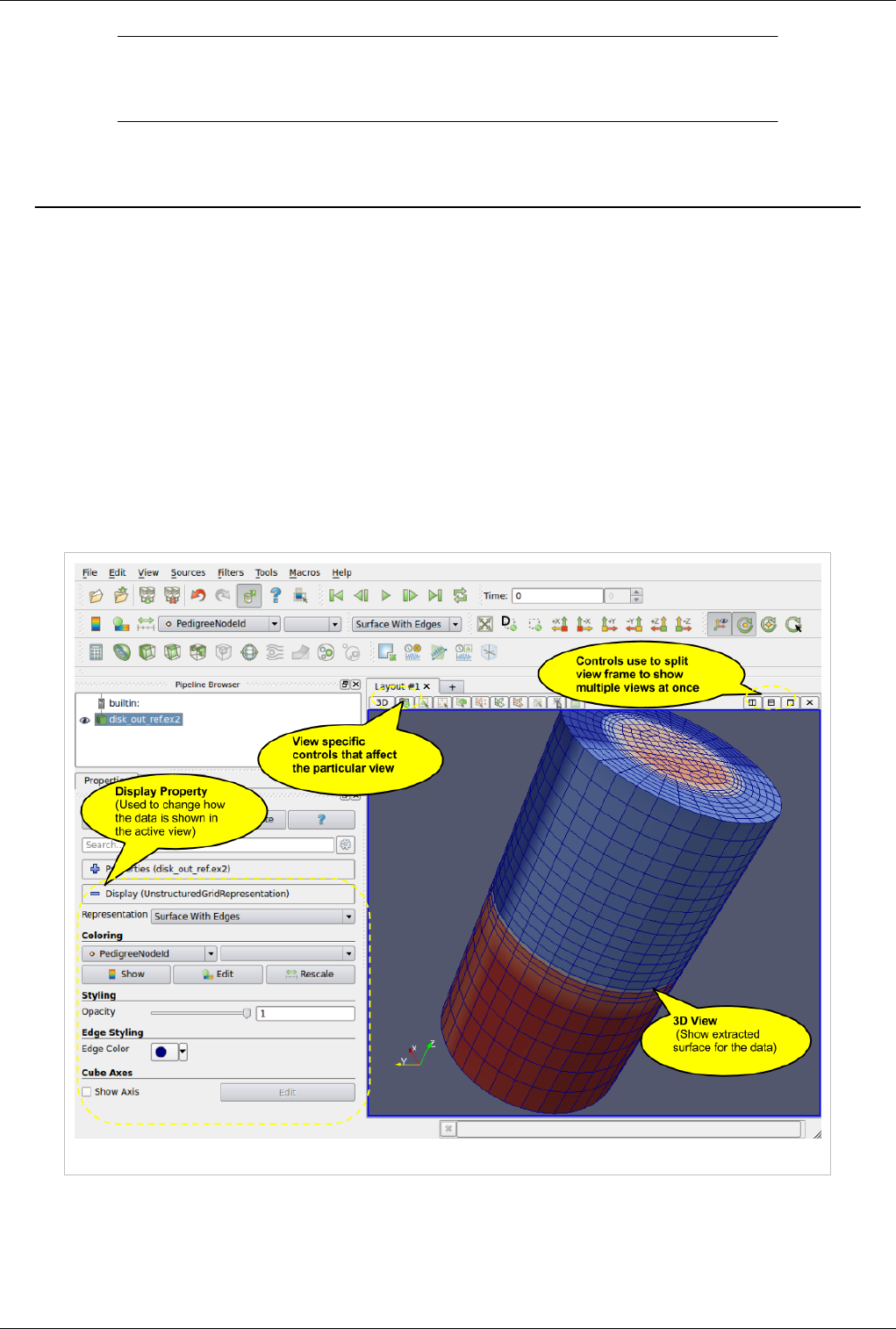

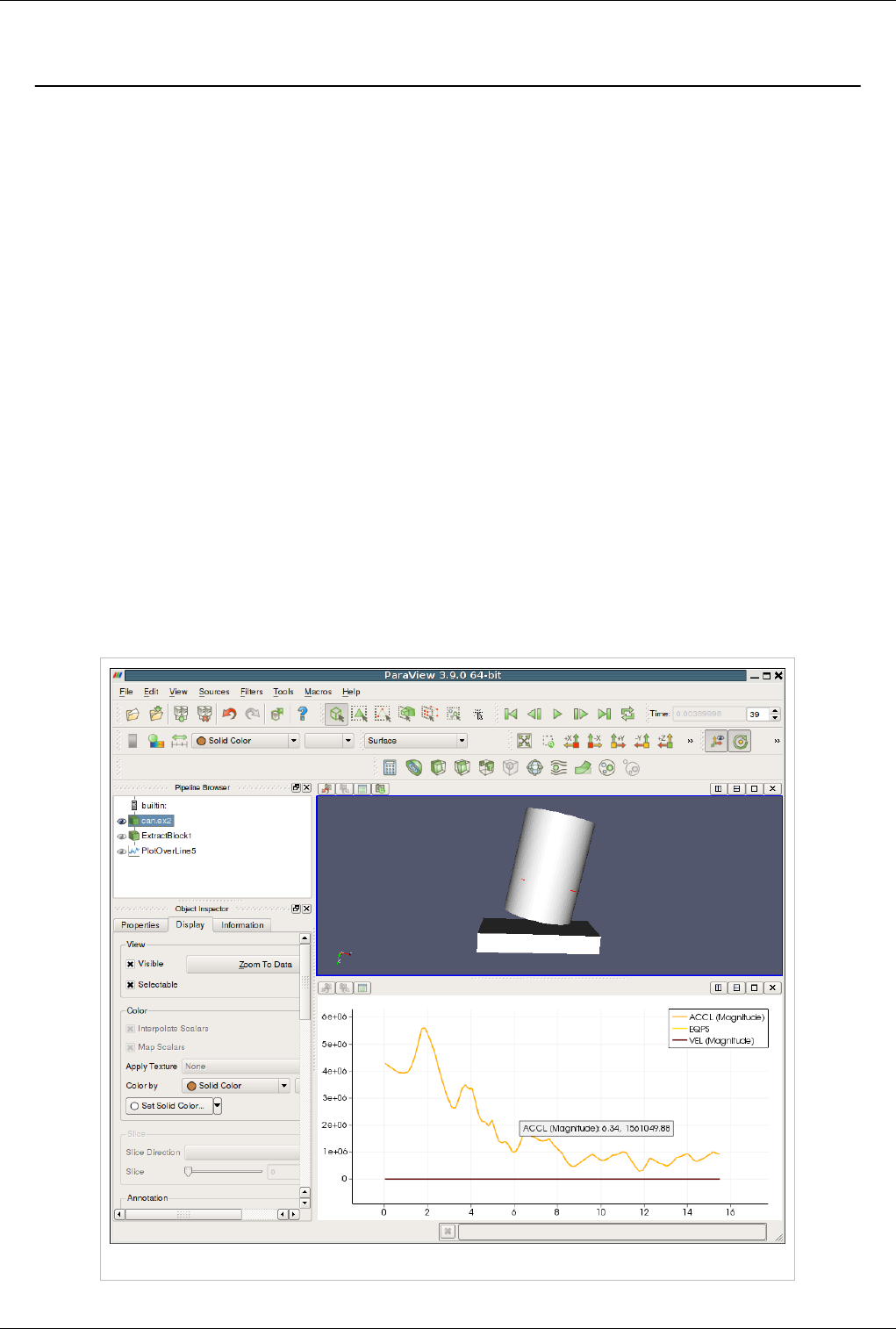

The different sections of ParaView's Graphical User Interface (GUI) are shown below. Of particular importance in

the following discussion are the:

• File and Filter menus which allow you to open files and manipulate data

• Pipeline Browser which displays the visualization pipeline

• Properties panel where you can can control any given module within the pipeline

• View area where data is displayed in one or more windows

Figure 1.1 ParaView GUI Overview

Modality

One very important thing to keep in mind when using ParaView is that the GUI is very modal. At any given time you

will have one "active" module within the visualization pipeline, one "active" view, and one "active" selection. For

example, when you click on the name of a reader or source within the Pipeline Browser, it becomes the active

module and the properties of that filter are displayed in the Properties panel. Likewise when you click within a

different view, that view becomes the active view and the visibility "eye" icons in the Pipeline Browser are changed

to show what filters are displayed within this View. These concepts will be described in detail in later chapters

(Multiple Views [2],Pipeline Basics [3],Selection [4]). For now you should be aware that the information displayed in

the GUI always pertains to these active entities.

About Paraview 3

Features

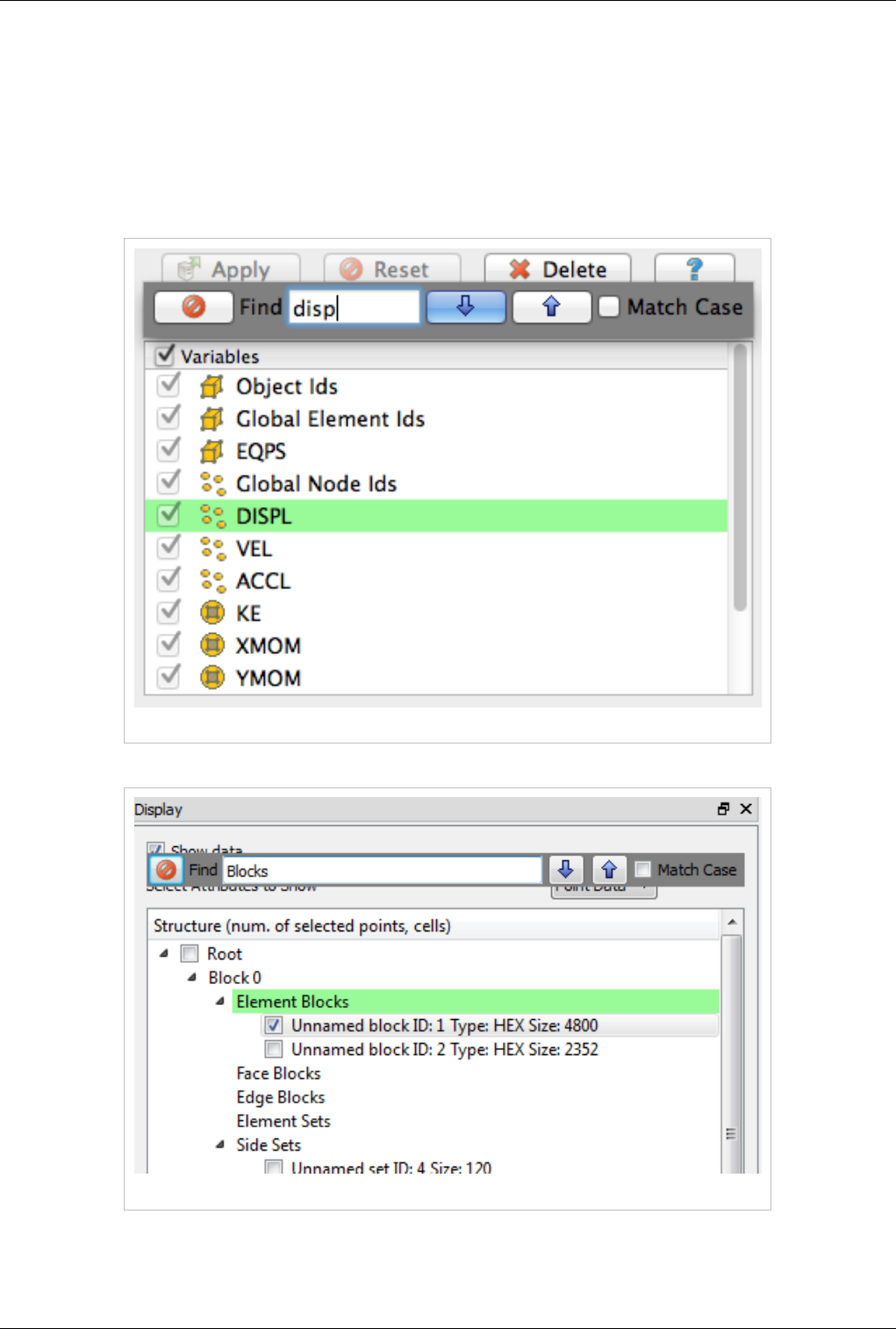

Modern graphical applications allow users to treat the GUI as a document where informations can be queried and

used by Copy/Paste from one place to another and that's precisely where we are heading to with ParaView. Typically

user can query any Tree/Table/List view widget in the UI by activating that component and by hitting the Ctrl+F or

Command+F on Mac keyboard shortcut, while the view widget is in focus. This will enable a dynamic widget

showing up which get illustrated in the following screenshot. This search-widget will be closed when the view

widget lost focus, or the Esc button is pressed, or the Close button on the search-widget is clicked.

Figure 1.2 Searching in lists

Figure 1.3 Searching in trees

In order to retrieve data from spreadsheet or complex UI, you will need to double click on the area that you are

interested in and select the portion of text that you want to select to Copy. The set of screenshots below illustrate

About Paraview 5

Figure 1.7 Copying values from Information Tab

Basics of Visualization

Put simply, the process of visualization is taking raw data and converting it to a form that is viewable and

understandable to humans. This enables a better cognitive understanding of our data. Scientific visualization is

specifically concerned with the type of data that has a well-defined representation in 2D or 3D space. Data that

comes from simulation meshes and scanner data is well suited for this type of analysis.

There are three basic steps to visualizing your data: reading, filtering, and rendering. First, your data must be read

into ParaView. Next, you may apply any number of filters that process the data to generate, extract, or derive

features from the data. Finally, a viewable image is rendered from the data and you can then change the viewing

parameters or rendering modality for best the visual effect.

The Pipeline Concept

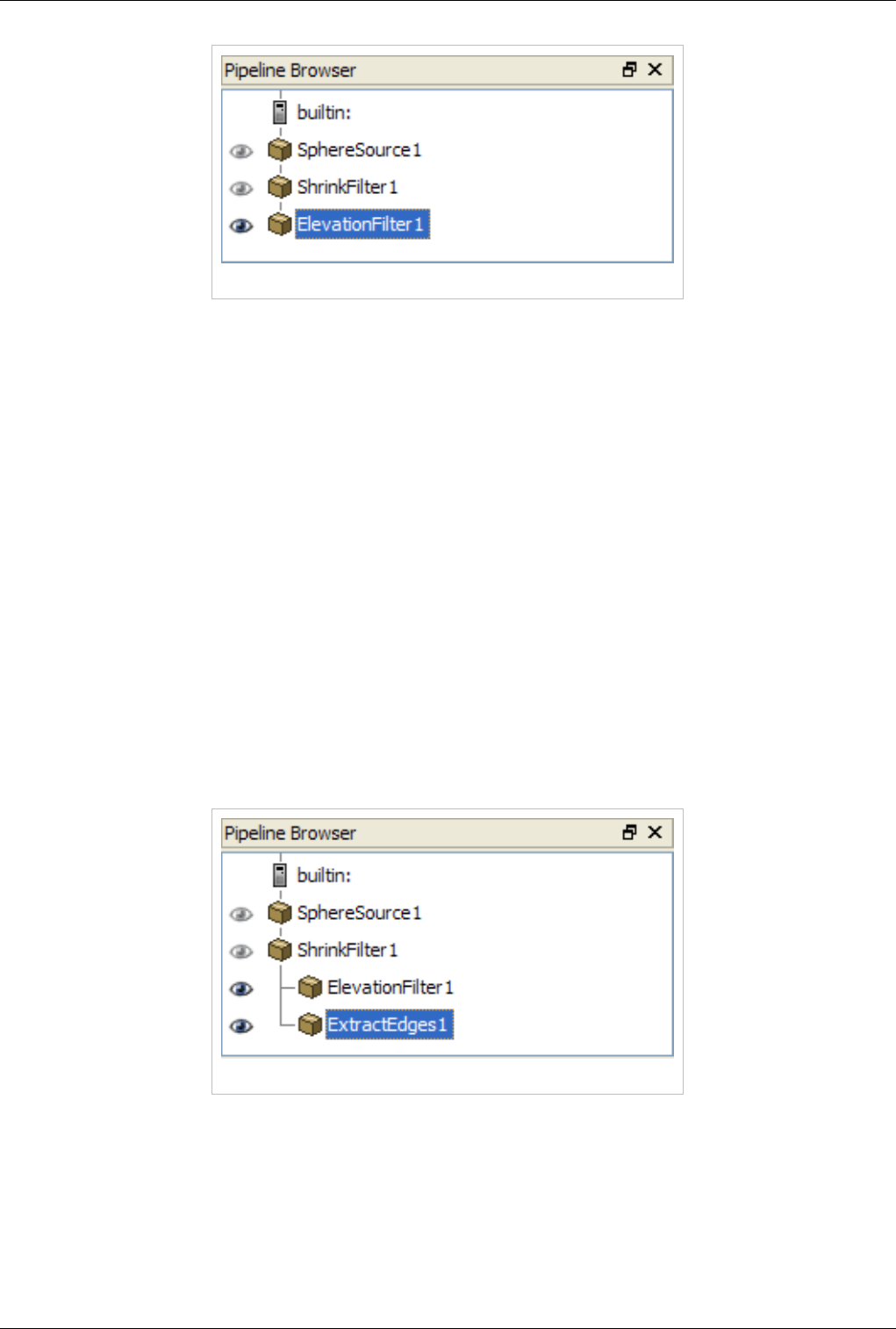

In ParaView, these steps are made manifest in a visualization pipeline. That is, you visualize data by building up a

set of modules, each of which takes in some data, operates on it, and presents the result as a new dataset. This begins

with a reader module that ingests data off of files on disk.

Reading data into ParaView is often as simple as selecting Open from the File menu, and then clicking the glowing

Accept button on the Properties panel. ParaView comes with support for a large number of file formats [5], and its

modular architecture makes it possible to add new file readers [6].

Once a file is read, ParaView automatically renders it in a view. In ParaView, a view is simply a window that shows

data. There are different types of views, ranging from qualitative computer graphics rendering of the data to

quantitative spreadsheet presentations of the data values as text. ParaView picks a suitable view type for your data

automatically, but you are free to change the view type, modify the rendering parameters of the data in the view, and

even create new views simultaneously as you see fit to better understand what you have read in. Additionally,

high-level meta information about the data including names, types and ranges of arrays, temporal ranges, memory

size and geometric extent can be found in the Information tab.

You can learn a great deal about a given dataset with a one element visualization pipeline consisting of just a reader

module. In ParaView, you can create arbitrarily complex visualization pipelines, including ones with multiple

readers, merging and branching pipelines. You build up a pipeline by choosing the next filter in the sequence from

the Filters menu. Once you click Accept, this new filter will read in the data produced by the formerly active filter

and perform some processing on that data. The new filter then becomes the active one. Filters then are created

differently from but operate in the same manner as readers. At all points you use the Pipeline Inspector to choose the

active filter and then the Properties panel to configure it.

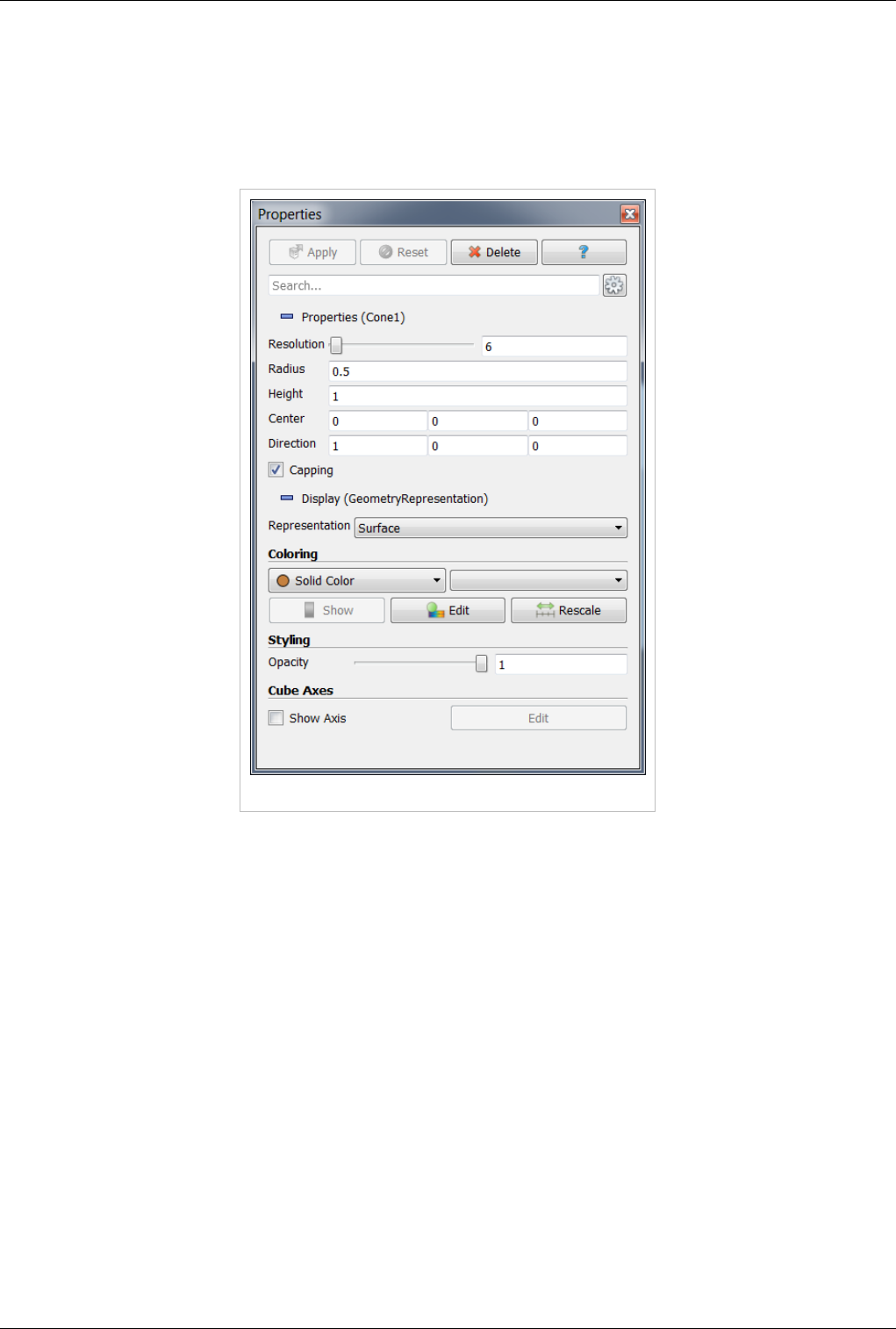

The Pipeline Browser is where the overall visualization pipeline is displayed and controlled from. The Properties

panel is where the specific parameters of one particular module within the pipeline are displayed and controlled

from. The Properties panel has two sections: Properties section presents the parameters for the processing done

within that module, Display section presents the parameters of how the output of that module will be displayed in a

view (namely, the active view). There's also in Information panel, which presents the meta information about the

About Paraview 6

data produced by the module as described above.

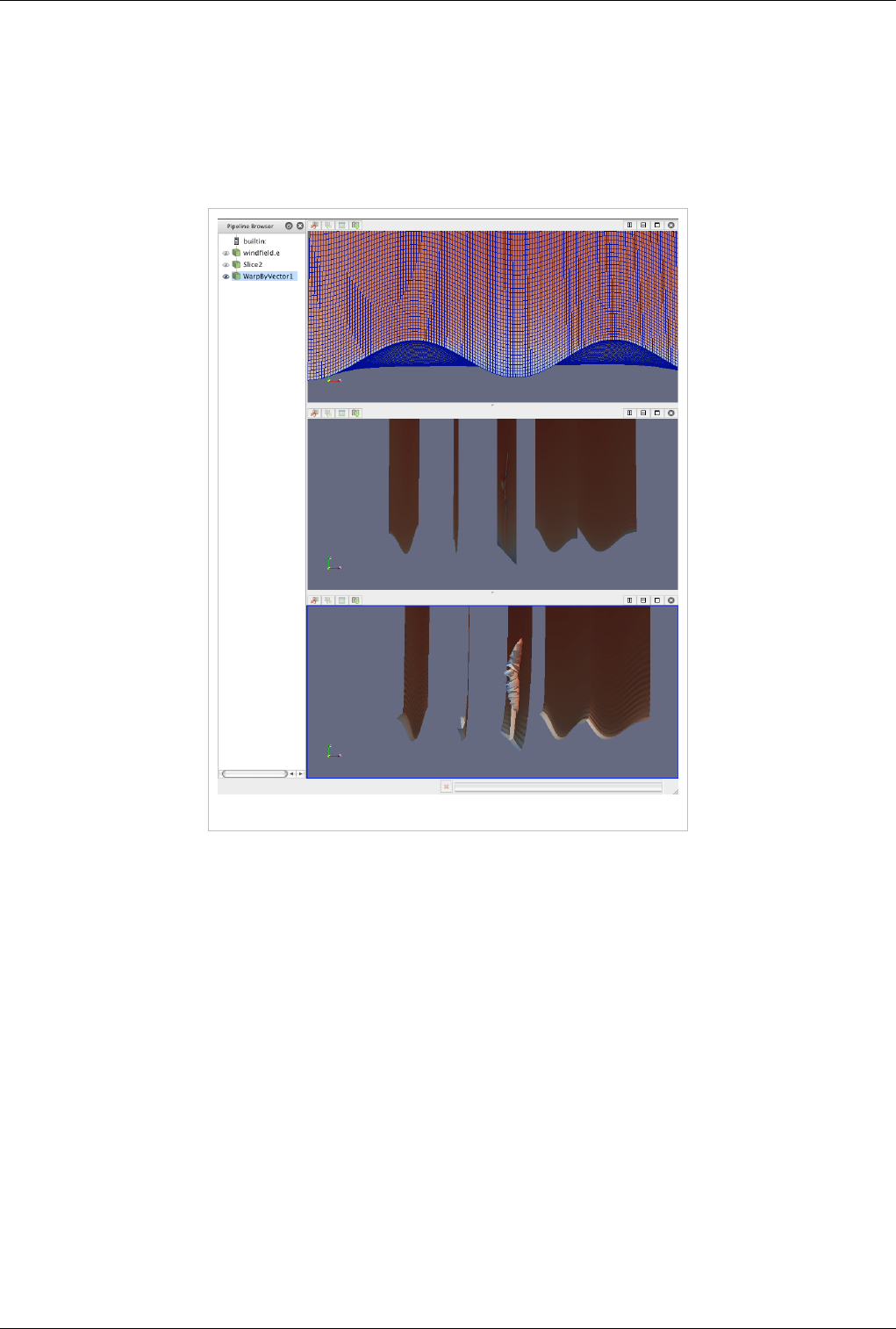

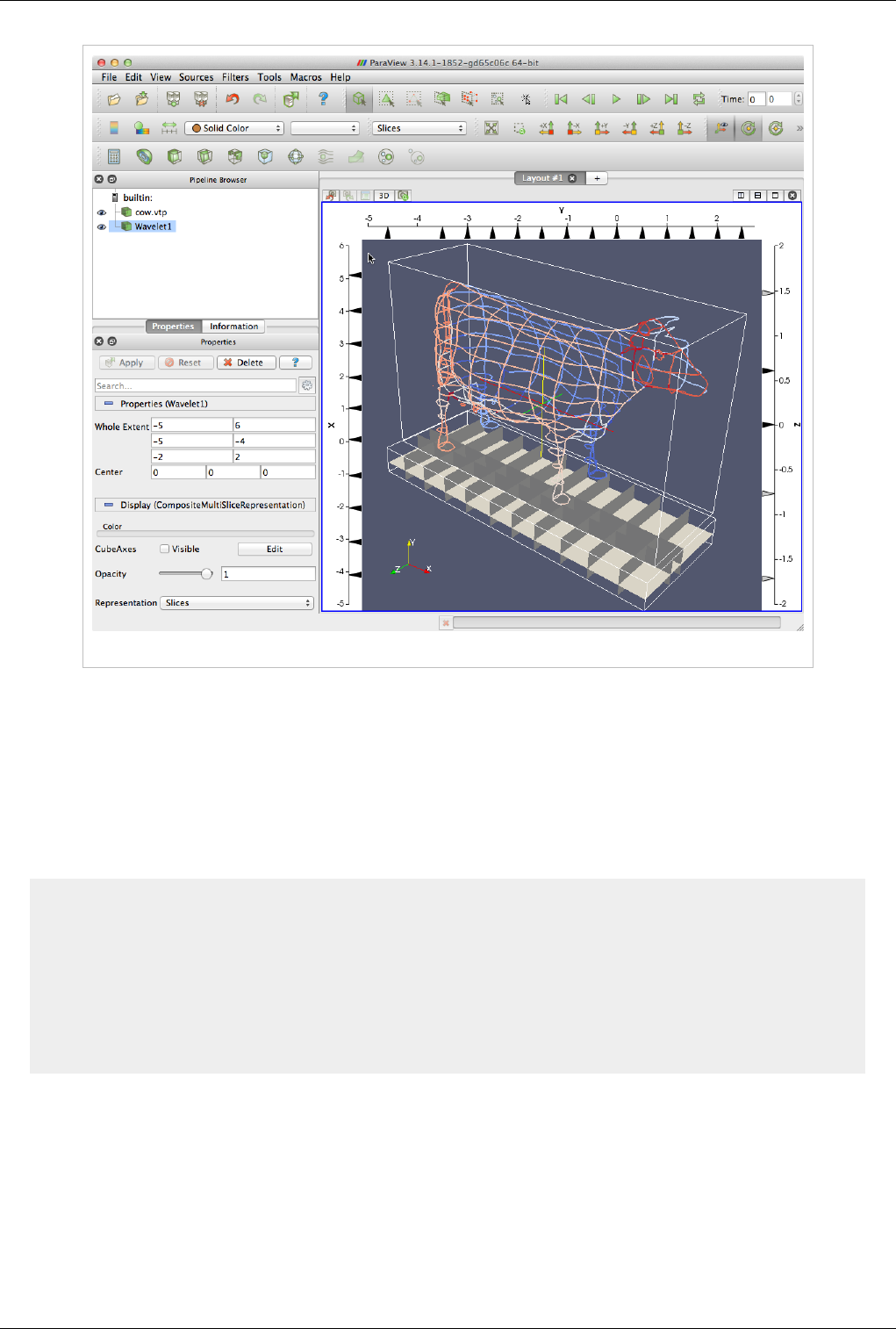

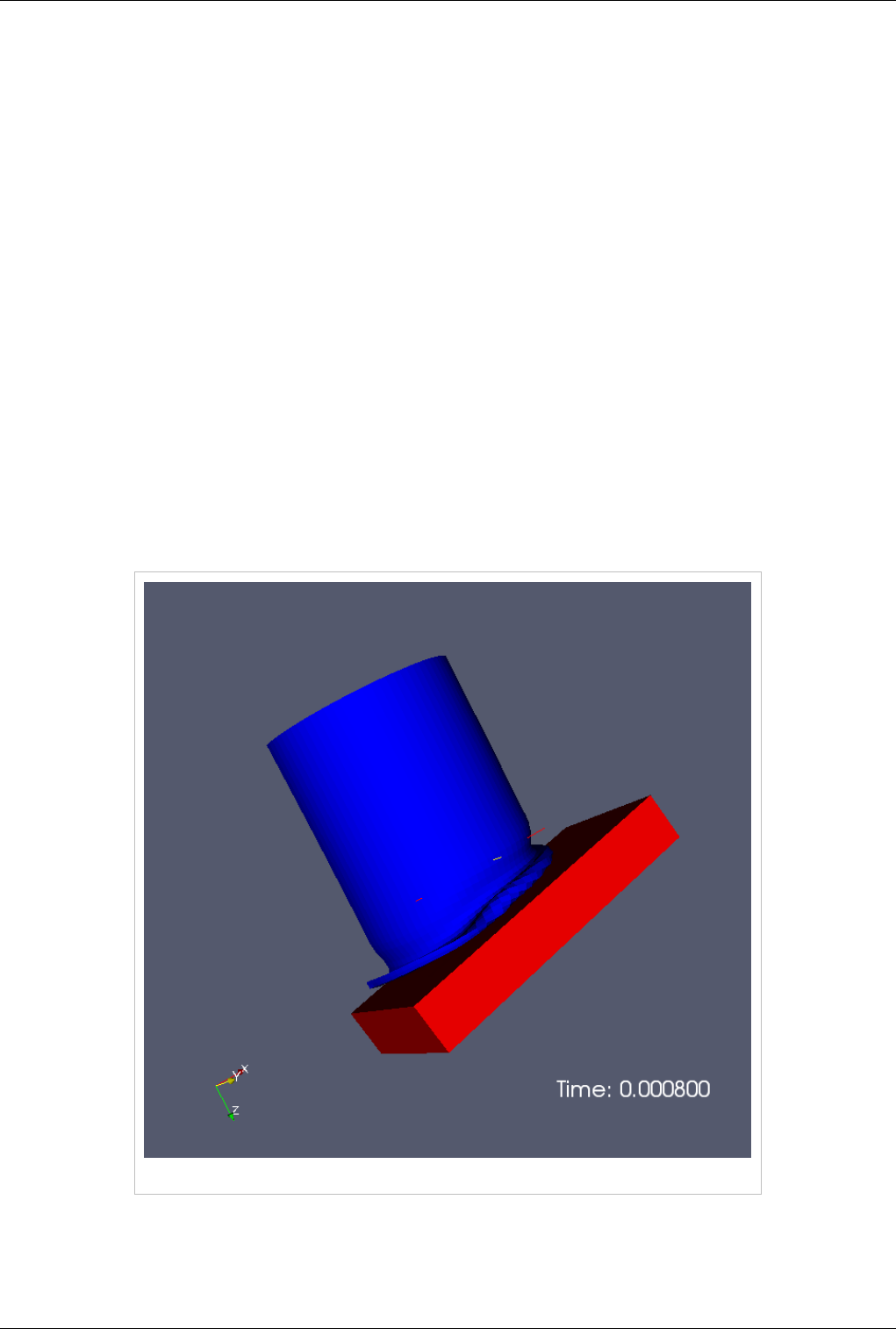

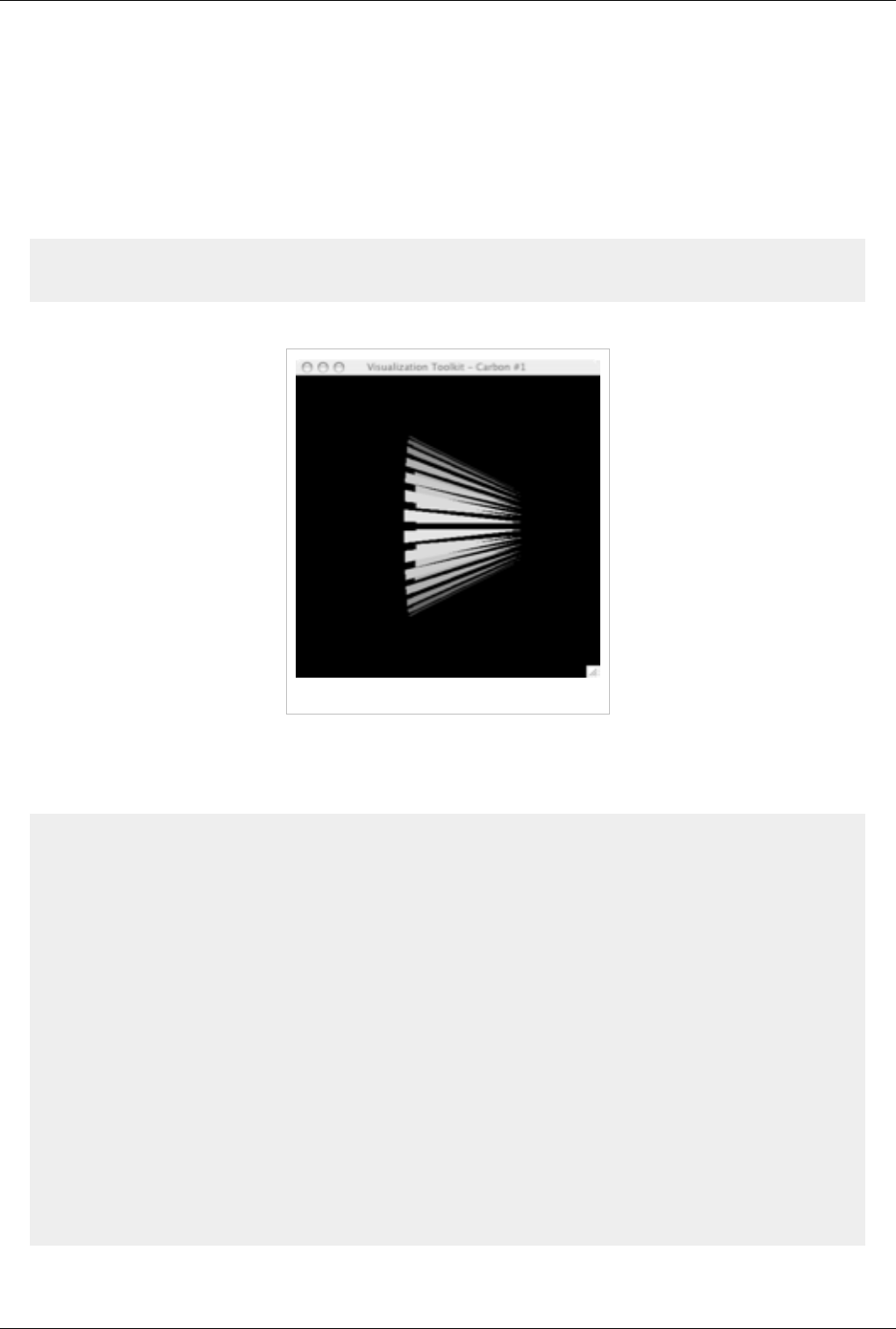

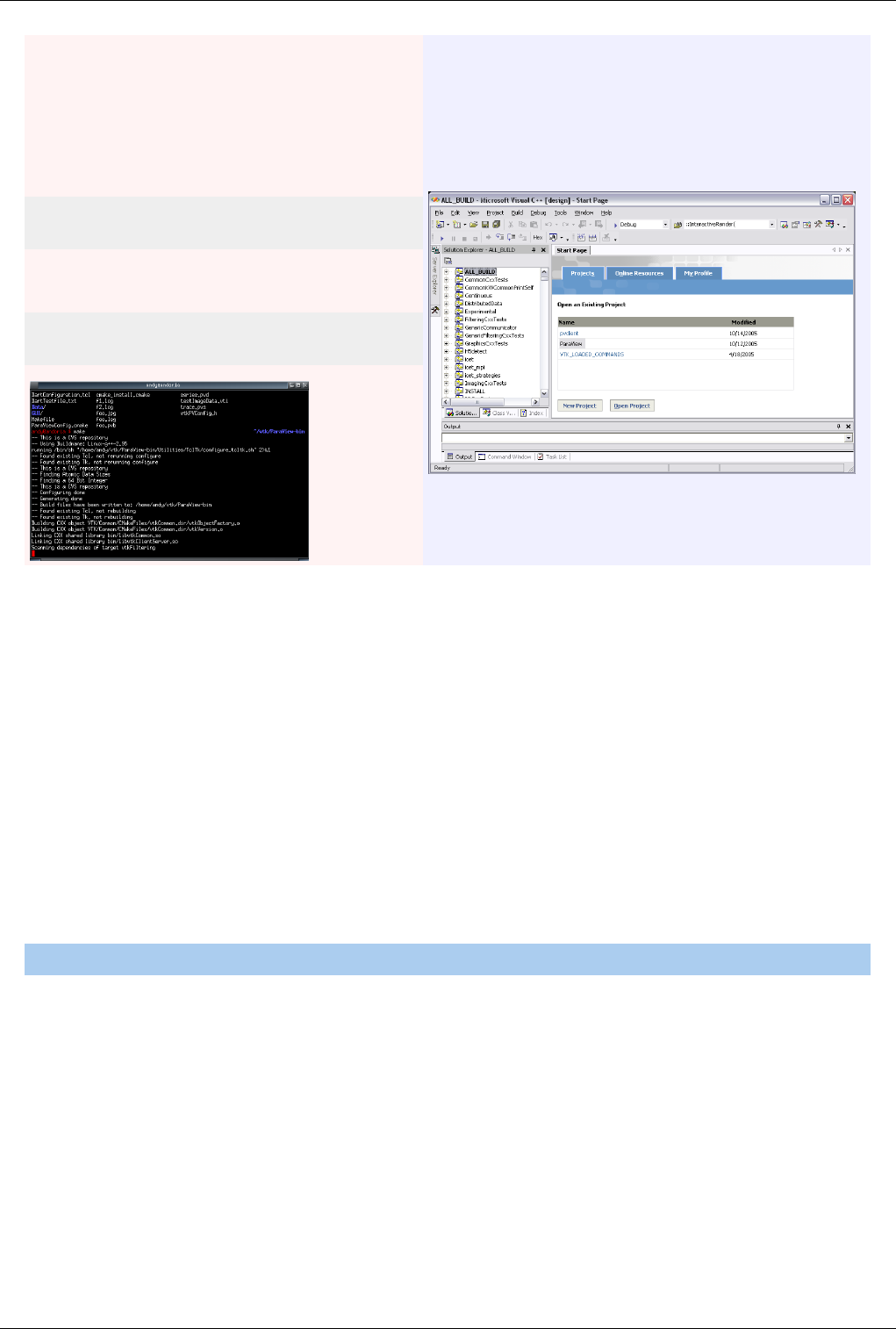

Figure 1.8 demonstrates a three-element visualization pipeline, where the output of each module in the the pipeline is

displayed in its own view. A reader takes in a vector field, defined on a curvilinear grid, which comes from a

simulation study of a wind turbine. Next a slice filter produces slices of the field on five equally spaced planes along

the X-axis. Finally, a warp filter warps those planes along the direction of the vector field, which primarily moves

the planes downwind but also shows some complexity at the location of the wind turbine.

Figure 1.8 A three-element visualization pipeline

There are more than one hundred filters available to choose from, all of which manipulate the data in different ways.

The full list of filters is available in the Appendix [5] and within the application under the Help menu. Note that many

of the filters in the menu will be grayed out and not selectable at any given time. That is because any given filter may

only operate on particular types of data. For example, the Extract Subset filter will only operate on structured data

sets so it is only enabled when the module you are building on top of produces image data, rectilinear grid data, or

structured grid data. (These input restrictions are also listed in the Appendix [7] and help menu). In this situation you

can often find a similar filter which does accept your data, or apply a filter which transforms your data into the

required format. In ParaView 3.10, you can ask ParaView to try to do the conversion for you automatically, by

clicking "Auto convert properties" in the application settings [8]. The mechanics of applying filters are described

fully in the Manipulating Data [9] chapter.

About Paraview 7

Making Mistakes

Frequently, new users of ParaView falter when they open their data, or apply a filter, and do not see it immediately

because they have not pressed the Apply button. ParaView was designed to operate on large datasets, for which any

given operation could take a long time to finish. In this situation you need the Apply button so that you have a

chance to be confident of your change before it takes effect. The highlighted Apply button is a reminder that the

parameters of one or more filters are out of sync with the data that you are viewing. Hitting the Apply button accepts

your change (or changes) whereas hitting the Reset button reverts the options back to the last time you hit Apply. If

you are working with small datasets, you may want to turn off this behavior with the Auto Accept setting under the

Application Settings [8].

The Apply behavior circumvents a great number of mistakes but not all of them. If you make some change to a filter

or to the pipeline itself and later find that you are not satisfied with the result, hit the Undo button. You can undo all

the way back to the start of your ParaView session and redo all the way forward if you like. You can also undo and

redo camera motion by using the camera undo and redo buttons located above each view window.

Persistent Sessions

If on the other hand you are satisfied with your visualization results, you may want to save your work so that you can

return to it at some future time. You can do so by using ParaView's Save State (File|Save State) and Save Trace

(Tools | Save Trace) features. In either case, ParaView produces human readable text files (XML files for State and

Python script for Trace) that can be restored or modified and restored later. This is very useful for batch processing,

which is discussed in the Python Scripting [10] chapter.

To save state means to save enough information about the ParaView session to restore it later and thus show exactly

the same result. ParaView does so by saving the current visualization pipeline and parameters of the filters within it.

If you turn on a trace recording when you first start using ParaView, saving a trace can be used for the same purpose

as saving state. However, a trace records all of your actions, including the ones that you later undo, as you do them.

It is a more exact recording of not only what you did, but how you did it. Traces are saved as python scripts, which

ParaView can play back in either batch mode or within an interactive GUI session. You can therefore use traces then

to automate repetitive tasks by recording just that action. It is also an ideal tool to learn ParaView's python scripting

API.

Client/Server Visualization

With small datasets it is usually sufficient to run ParaView as a single process on a small laptop or desktop class

machine. For large datasets, a single machine is not likely to have enough processing power and, much more

importantly, memory to process the data. In this situation you run an MPI parallel ParaView Server process on a

large machine to do computationally and memory expensive data processing and/or rendering tasks and then connect

to that server within the familiar GUI application.

When connected to a remote server the only difference you will see will be that the visualization pipeline displayed

in the Pipeline Browser will begin with the name of the server you are connected to rather than the word 'builtin'

which indicates that you are connected to a virtual server residing in the same process as the GUI. When connected

to a remote server, the File Open dialog presents the list of files that live on the remote machine's file system rather

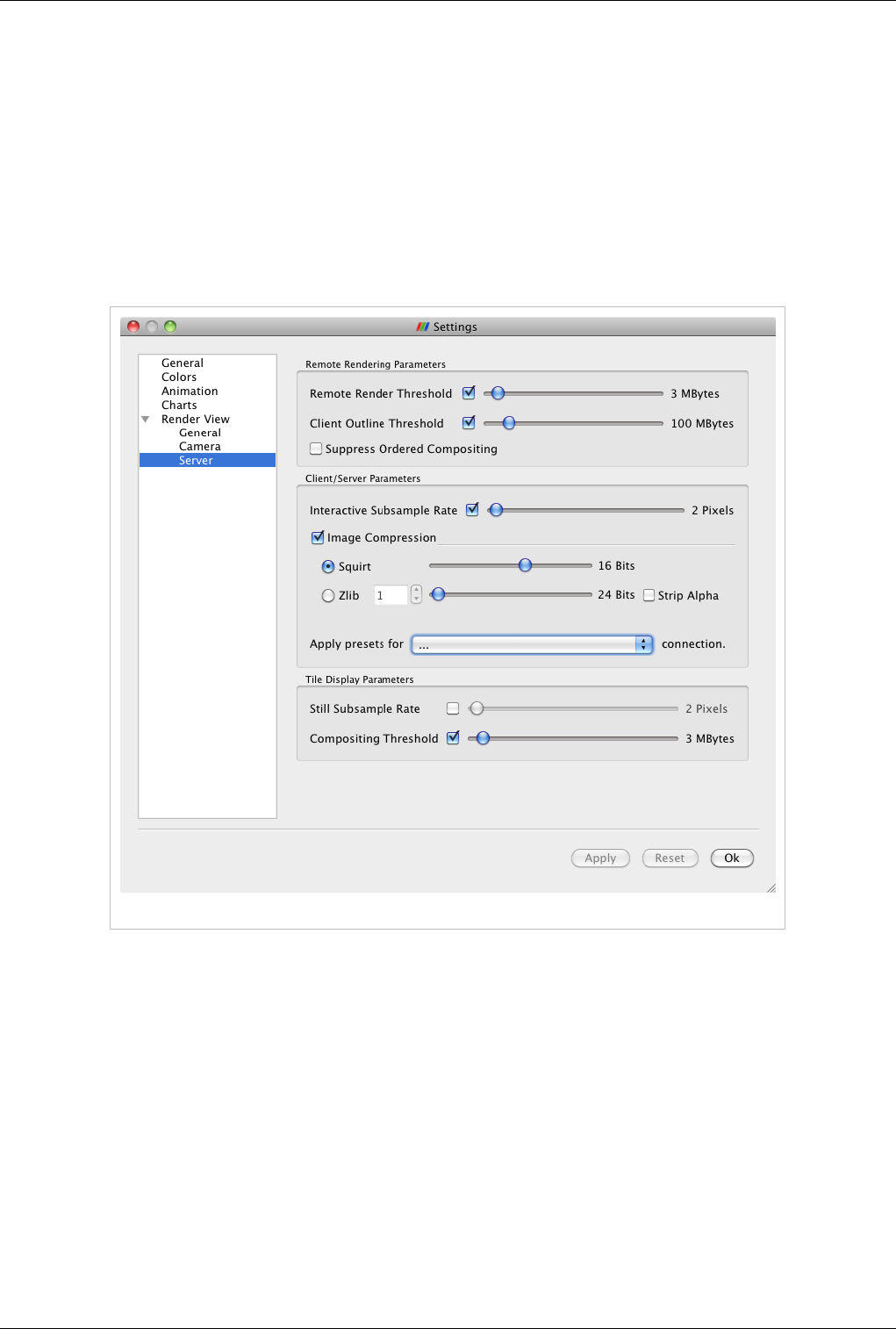

than the client's. Depending on the server's capabilities, the data size and your application settings

(Edit|Settings|Render View|Server) the data will either be rendered remotely and pixels will be sent to the client or

the geometry will be delivered and rendered locally. Large data visualization is described fully in the Client Server

Visualization [11] Chapter.

About Paraview 8

References

[1] http:/ / www. paraview. org/ Wiki/ ParaViewWeb

[2] http:/ / paraview. org/ Wiki/ ParaView/ Displaying_Data#Multiple_Views

[3] http:/ / paraview. org/ Wiki/ ParaView/ UsersGuide/ Filtering_Data#Pipeline_Basics

[4] http:/ / paraview. org/ Wiki/ ParaView/ Users_Guide/ Selection

[5] http:/ / paraview. org/ Wiki/ ParaViewUsersGuide/ List_of_readers

[6] http:/ / paraview. org/ Wiki/ Writing_ParaView_Readers

[7] http:/ / paraview. org/ Wiki/ ParaViewUsersGuide/ List_of_filters

[8] http:/ / paraview. org/ Wiki/ ParaView/ Users_Guide/ Settings

[9] http:/ / paraview. org/ Wiki/ ParaView/ UsersGuide/ Filtering_Data

[10] http:/ / paraview. org/ Wiki/ ParaView/ Python_Scripting

[11] http:/ / paraview. org/ Wiki/ Users_Guide_Client-Server_Visualization

9

Loading Data

Data Ingestion

Introduction

Loading data is a fundamental operation in using ParaView for visualization. As you would expect, the Open option

from the File menu and the Open Button from the toolbar both allow you to load data into ParaView. ParaView

understands many scientific data file formats. The most comprehensive list is given in the List of Readers [5]

appendix. Because of ParaView's modular design it is easy to integrate new readers. If the formats you need are not

listed, ask the mailing list first to see if anyone has a reader for the format or, if you want to create your own readers

for ParaView see the Plugin HowTo [1] section and the Writing Readers [6] appendix of this book.

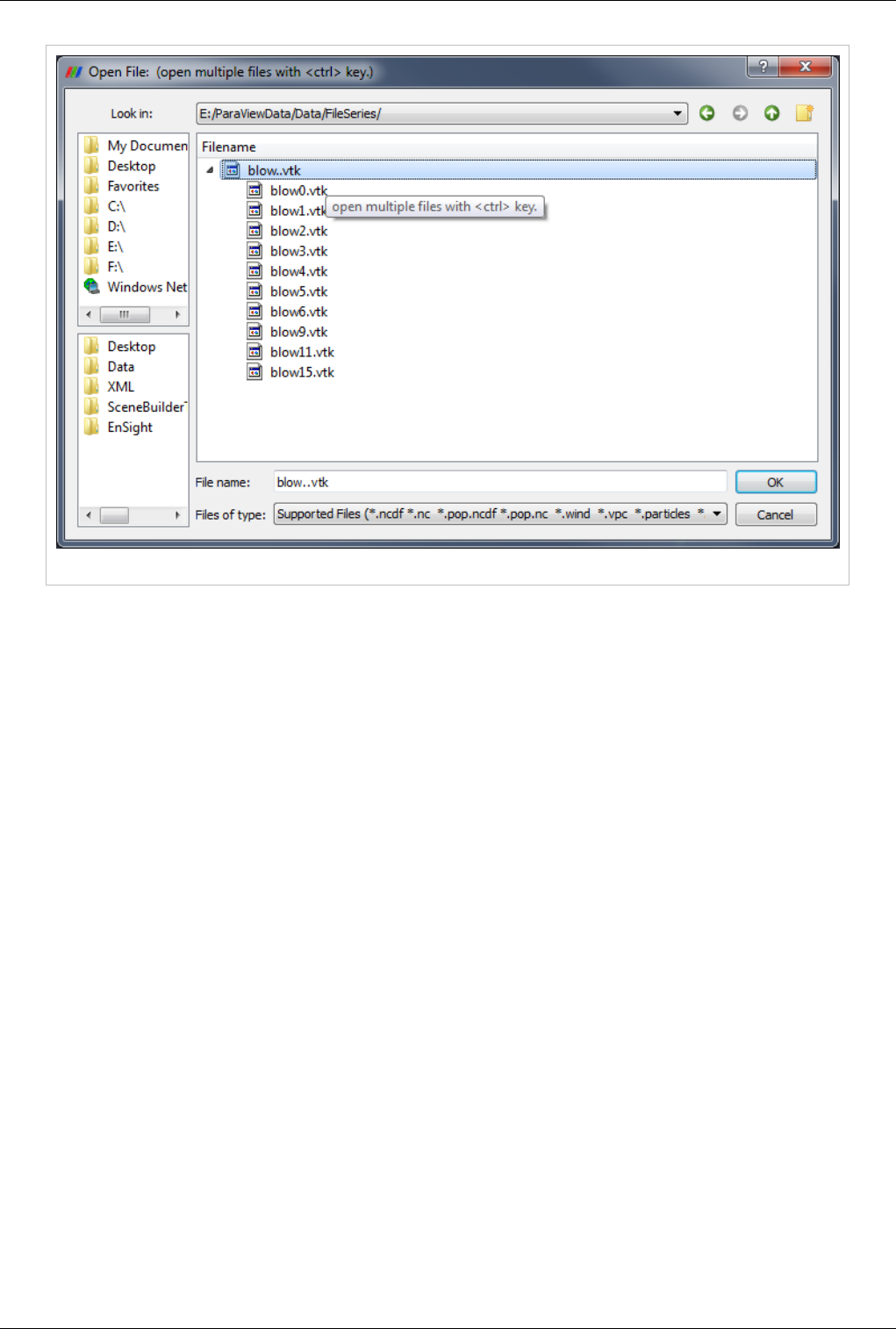

Opening File / Time Series

ParaView recognizes file series by using certain patterns in the name of files including:

• fooN.vtk

• foo_N.vtk

• foo-N.vtk

• foo.N.vtk

• Nfoo.vtk

• N.foo.vtk

• foo.vtk.N

• foo.vtk-sN

In the above file name examples, N is an integer (with any number of leading zeros). To load a file series, first make

sure that the file names match one of the patterns described above. Next, navigate to the directory where the file

series is. The file browser should look like Figure 2.1:

Data Ingestion 10

Figure 2.1 Sample browser when opening files

You can expand the file series by clicking on the triangle, as shown in the above diagram. Simply select the group

(in the picture named blow..vtk) and click OK. The reader will store all the filenames and treat each file as a time

step. You can now animate, use the annotate time filter, or do anything you can do with readers that natively support

time. If you want to load a single step of a file series just expand the triangle and select the file you are interested in.

Data Ingestion 11

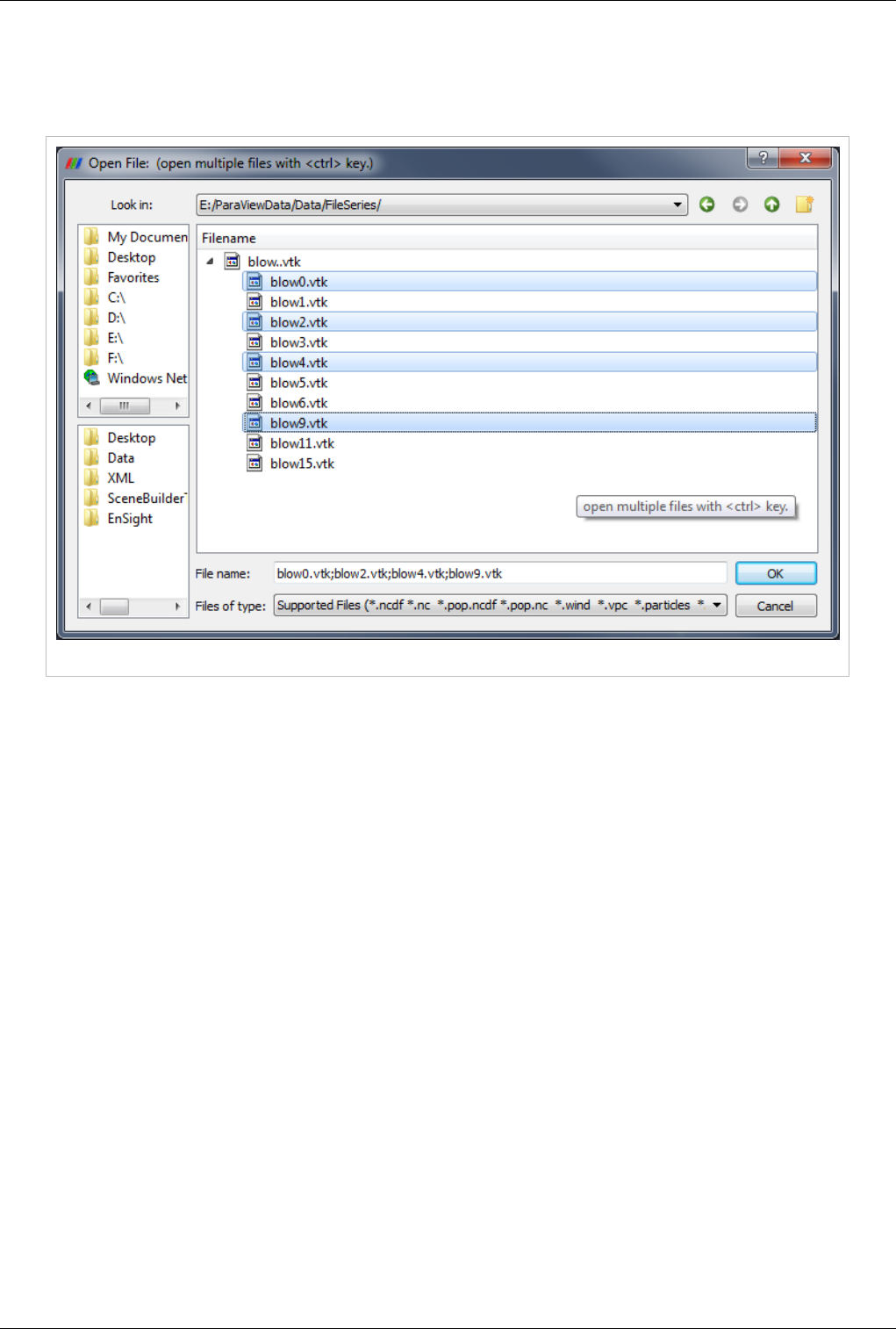

Opening Multiple Files

ParaView supports loading multiple files as long as they exist in the same directory. Just hold the Ctrl key down

while selecting each file (Figure 2.2), or hold shift to select all files in a range.

Figure 2.2 Opening multiple files

State Files

Another option is to load a previously saved state file (File|Load State). This will return ParaView to its state at the

time the file was saved by loading data files, applying filters.

Advanced Data Loading

If you commonly load the same data into ParaView each time, you can streamline the process by launching

ParaView with the data command-line argument (--data=data_file).

Properties Panel

Note that opening a file is a two step process, and so you do not see any data after opening a data file. Instead, you

see that the Properties Panel is populated with several options about how you may want to read the data.

Data Ingestion 12

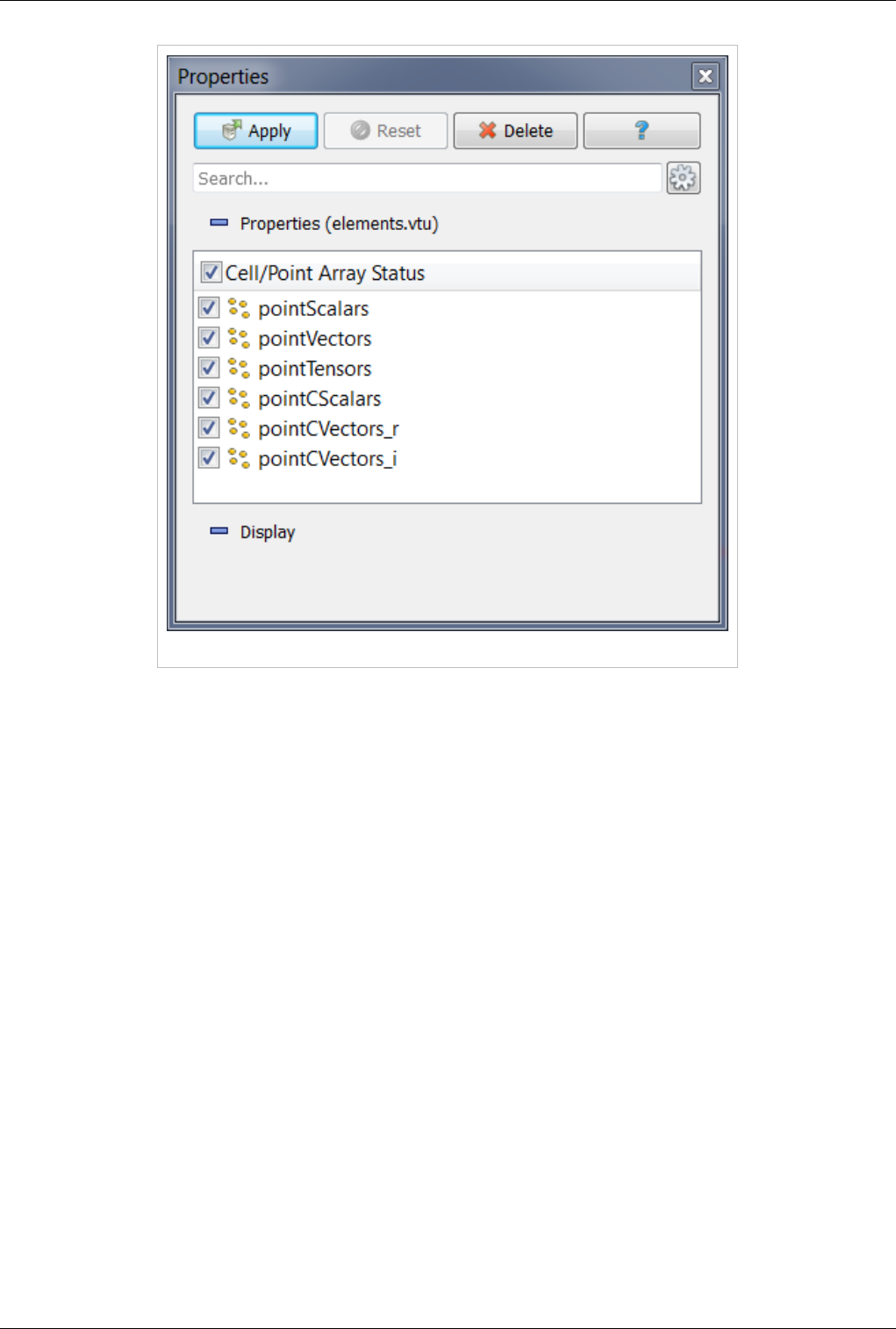

Figure 2.3 Using the Properties panel

Once you have enabled all the options on the data that you are interested in click the Apply button to finish loading

the data. For a more detailed explanation of the object inspector read the Properties Section .

References

[1] http:/ / www. paraview. org/ Wiki/ ParaView/ Plugin_HowTo#Adding_a_Reader

13

Understanding Data

VTK Data Model

Introduction

To use ParaView effectively, you need to understand the ParaView data model. This chapter briefly introduces the

VTK data model used by ParaView. For more details, refer to one of the VTK books.

The most fundamental data structure in VTK is a data object. Data objects can either be scientific datasets such

rectilinear grids or finite elements meshes (see below) or more abstract data structures such as graphs or trees. These

datasets are formed of smaller building blocks: mesh (topology and geometry) and attributes.

Mesh

Even though the actual data structure used to store the mesh in memory depends on the type of the dataset, some

abstractions are common to all types. In general, a mesh consists of vertices (points) and cells (elements, zones).

Cells are used to discretize a region and can have various types such a tetrahedra, hexahedra etc. Each cell contains a

set of vertices. The mapping from cells to vertices is called the connectivity. Note that even though it is possible to

define data elements such as faces and edges, VTK does not represent these explicitly. Rather, they are implied by a

cell's type and its connectivity. One exception to this rule is the arbitrary polyhedron which explicitly stores its faces.

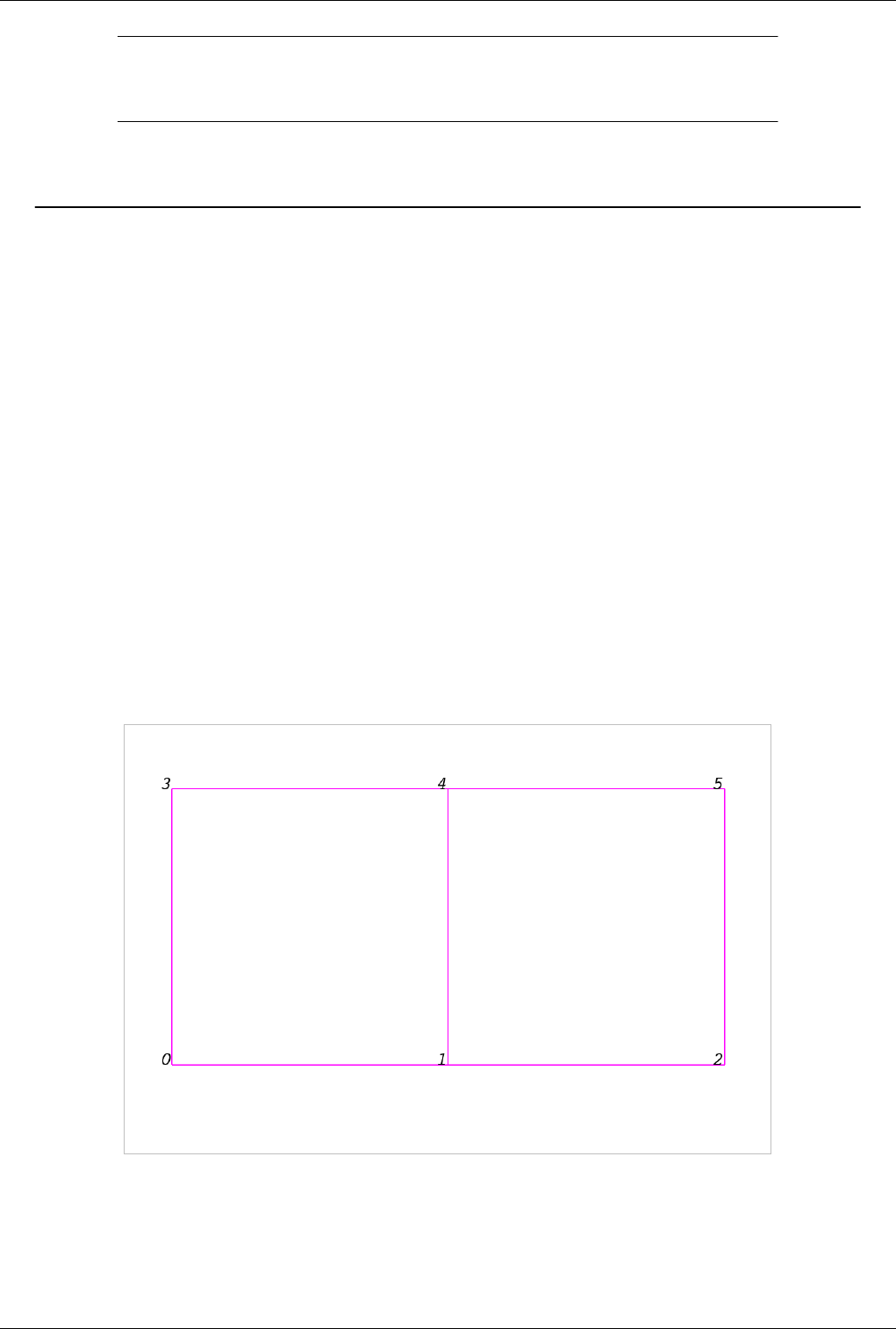

Figure 3.1 is an example mesh that consists of 2 cells. The first cell is defined by vertices (0, 1, 3, 4) and the second

cell is defined by vertices (1, 2, 4, 5). These cells are neighbors because they share the edge defined by the points (1,

4).

Figure 3.1 Example of a mesh

A mesh is fully defined by its topology and the spatial coordinates of its vertices. In VTK, the point coordinates may

be implicit or explicitly defined by a data array of dimensions (number_of_points x 3).

VTK Data Model 14

Attributes (fields, arrays)

An attribute (or a data array or field) defines the discrete values of a field over the mesh. Examples of attributes

include pressure, temperature, velocity and stress tensor. Note that VTK does not specifically define different types

of attributes. All attributes are stored as data arrays which can have an arbitrary number of components. ParaView

makes some assumptions in regards to the number of components. For example, a 3-component array is assumed to

be an array of vectors. Attributes can be associated with points or cells. It is also possible to have attributes that are

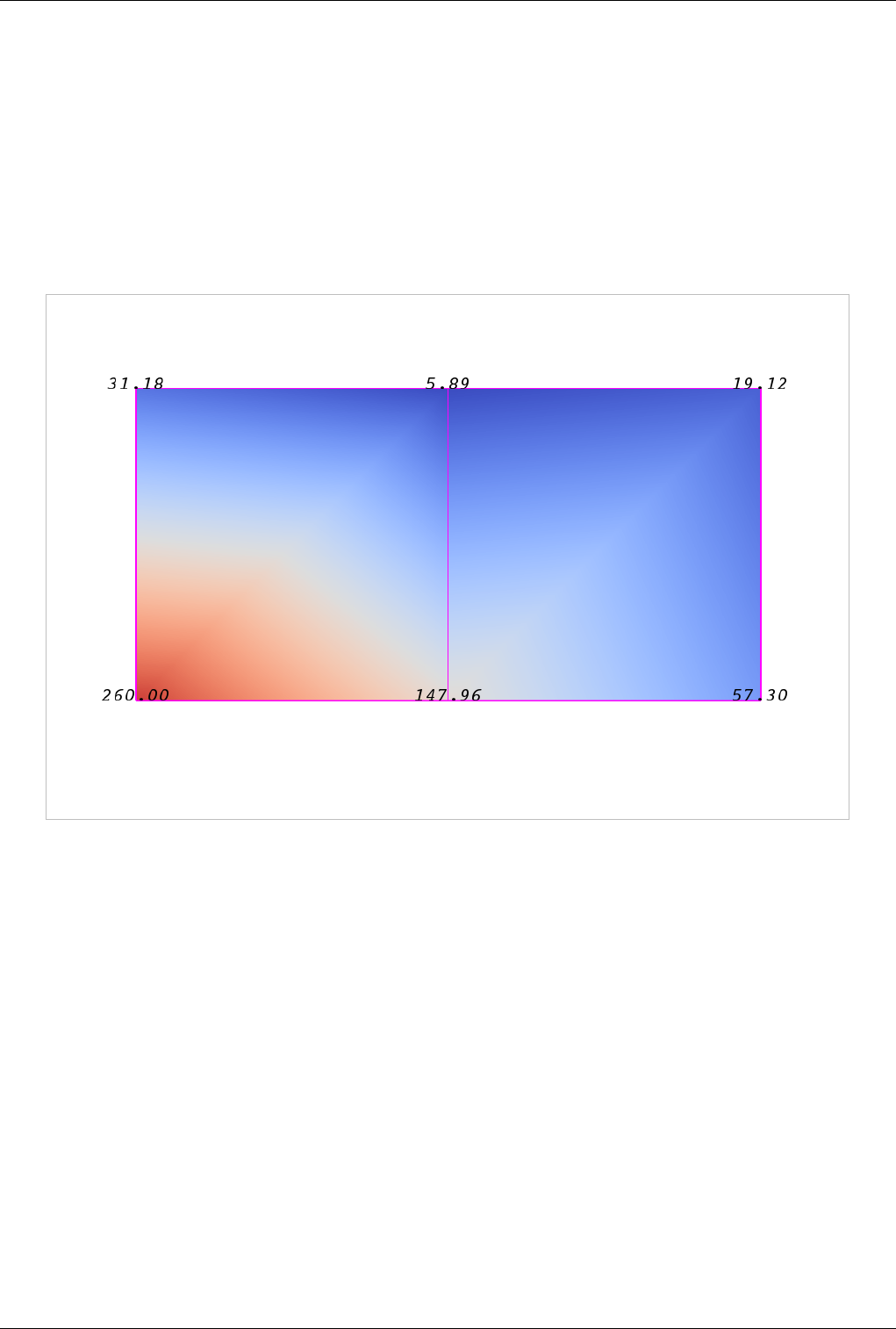

not associated with either. Figure 3.2 demonstrates the use of a point-centered attribute. Note that the attribute is only

defined on the vertices. Interpolation is used to obtain the values everywhere else. The interpolation functions used

depend on the cell type. See VTK documentation for details.

Figure 3.2 Point-centered attribute in a data array or field

Figure 3.3 demonstrates the use of a cell-centered attribute. Note that cell-centered attributes are assumed to be

constant over each cell. Due to this property, many filters in VTK cannot be directly applied to cell-centered

attributes. It is normally required to apply a Cell Data to Point Data filter. In ParaView, this filter is applied

automatically when necessary.

VTK Data Model 16

A uniform rectilinear grid, or image data, defines its topology and point coordinates implicitly. To fully define the

mesh for an image data, VTK uses the following:

• Extents - these define the minimum and maximum indices in each direction. For example, an image data of

extents (0, 9), (0, 19), (0, 29) has 10 points in the x-direction, 20 points in the y-direction and 30 points in the

z-direction. The total number of points is 10*20*30.

• Origin - this is the position of a point defined with indices (0, 0, 0)

• Spacing - this is the distance between each point. Spacing for each direction can defined independently

The coordinate of each point is defined as follows: coordinate = origin + index*spacing where coordinate, origin,

index and spacing are vectors of length 3.

Note that the generic VTK interface for all datasets uses a flat index. The (i,j,k) index can be converted to this flat

index as follows: idx_flat = k*(npts_x*npts_y) + j*nptr_x + i.

A uniform rectilinear grid consists of cells of the same type. This type is determined by the dimensionality of the

dataset (based on the extents) and can either be vertex (0D), line (1D), pixel (2D) or voxel (3D).

Due to its regular nature, image data requires less storage than other datasets. Furthermore, many algorithms in VTK

have been optimized to take advantage of this property and are more efficient for image data.

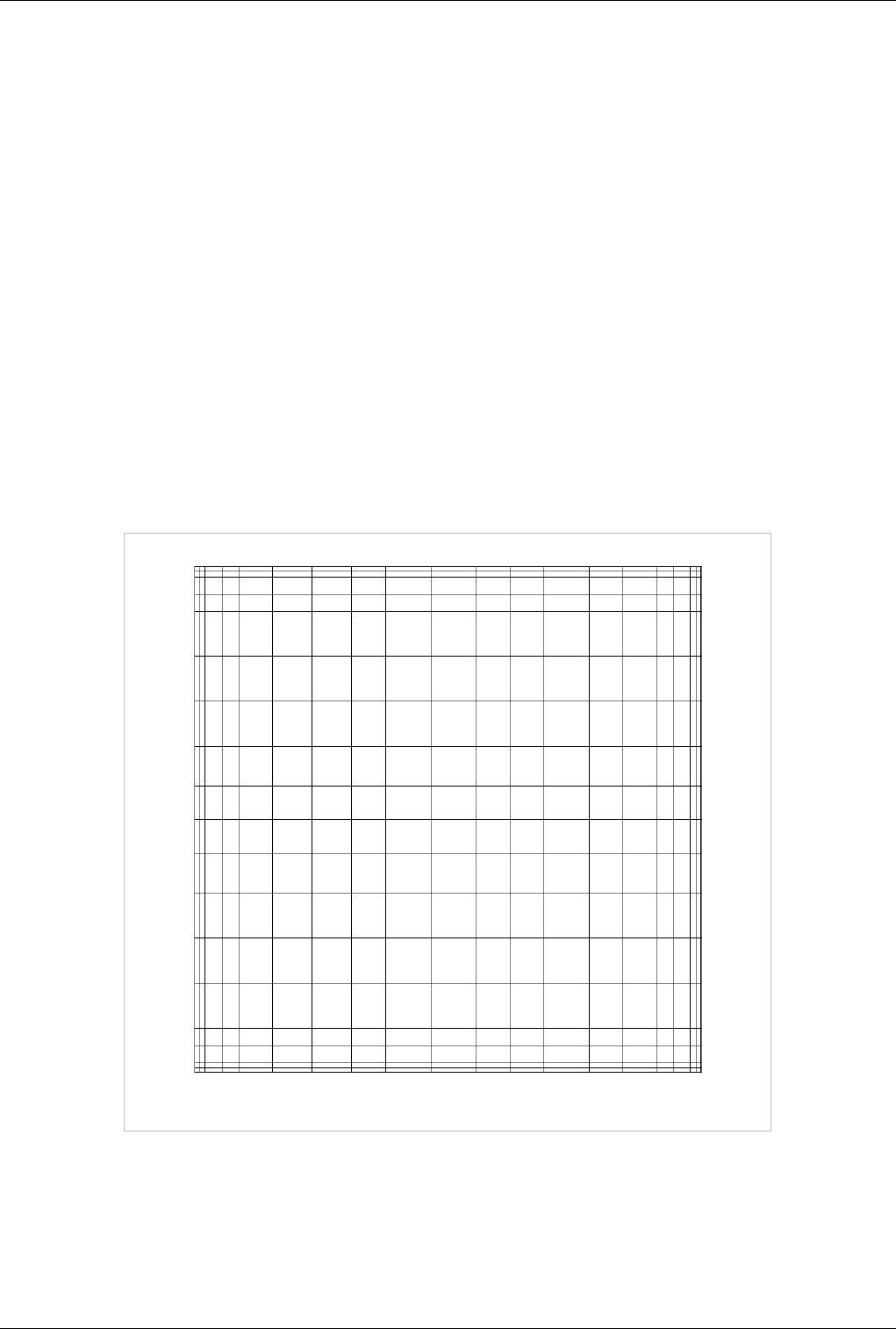

Rectilinear Grid

Figure 3.5 Rectilinear grid

A rectilinear grid such as Figure 3.5 defines its topology implicitly and point coordinates semi-implicitly. To fully

define the mesh for a rectilinear grid, VTK uses the following:

• Extents - these define the minimum and maximum indices in each direction. For example, a rectilinear grid of

extents (0, 9), (0, 19), (0, 29) has 10 points in the x-direction, 20 points in the y-direction and 30 points in the

z-direction. The total number of points is 10*20*30.

VTK Data Model 17

• Three arrays defining coordinates in the x-, y- and z-directions. These arrays are of length npts_x, npts_y and

npts_z. This is a significant savings in memory as total memory used by these arrays is npts_x+npts_y+npts_z

rather than npts_x*npts_y*npts_z.

The coordinate of each point is defined as follows: coordinate = (coordinate_array_x(i), coordinate_array_y(j),

coordinate_array_z(k))".

Note that the generic VTK interface for all datasets uses a flat index. The (i,j,k) index can be converted to this flat

index as follows: idx_flat = k*(npts_x*npts_y) + j*nptr_x + i.

A rectilinear grid consists of cells of the same type. This type is determined by the dimensionality of the dataset

(based on the extents) and can either be vertex (0D), line (1D), pixel (2D) or voxel (3D).

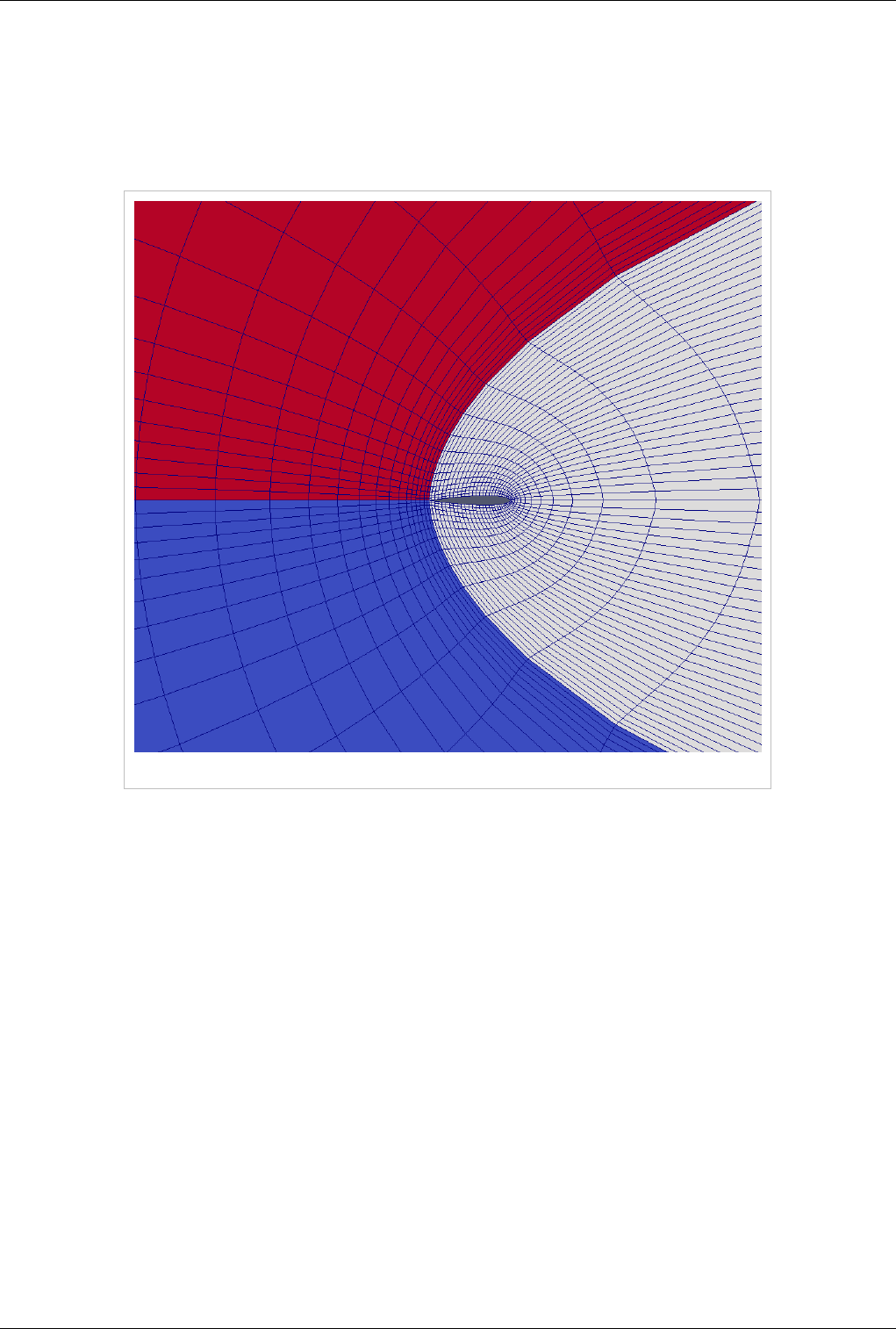

Curvilinear Grid (Structured Grid)

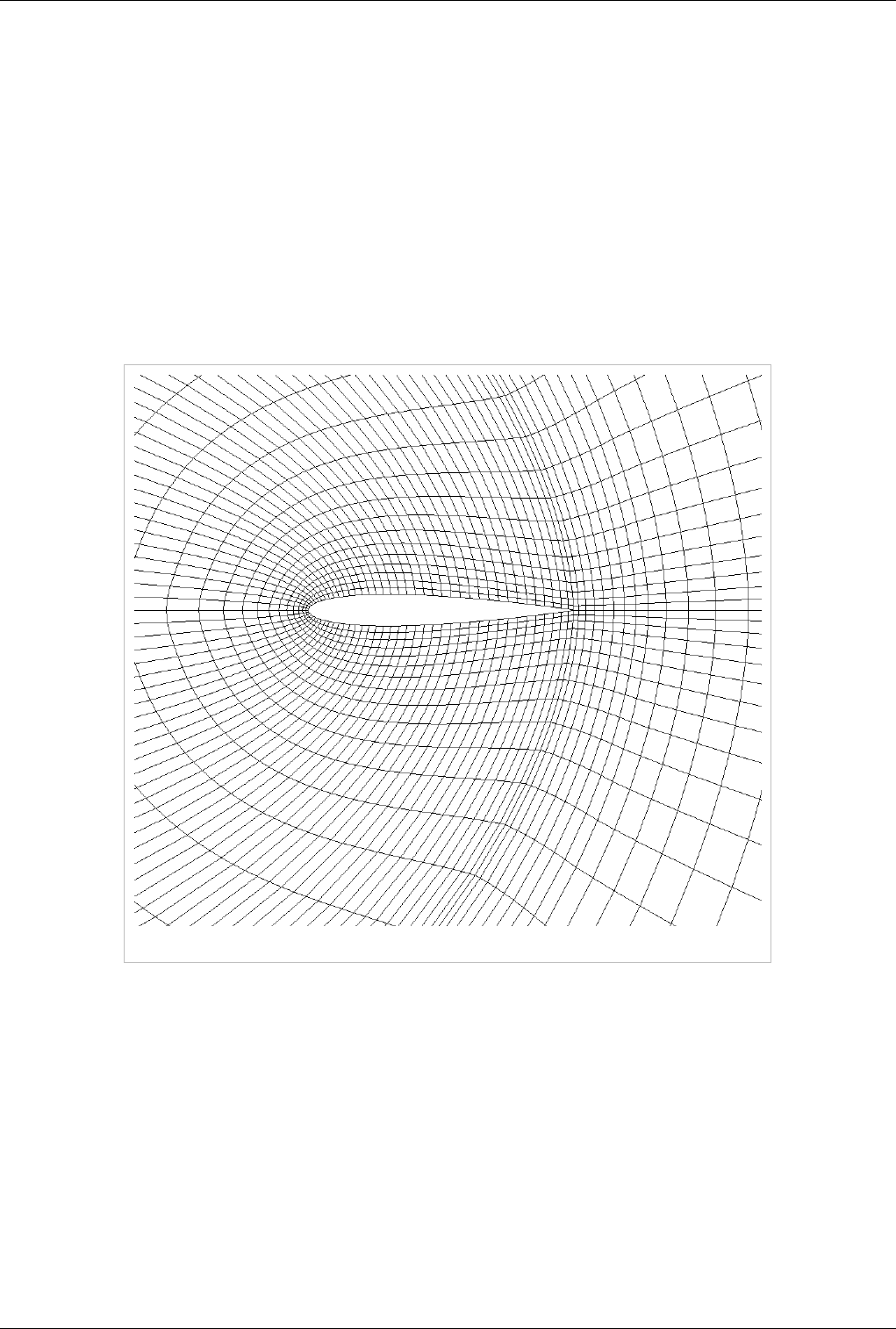

Figure 3.6 Curvilinear or structured grid

A curvilinear grid, such as Figure 3.6, defines its topology implicitly and point coordinates explicitly. To fully define

the mesh for a curvilinear grid, VTK uses the following:

• Extents - these define the minimum and maximum indices in each direction. For example, a curvilinear grid of

extents (0, 9), (0, 19), (0, 29) has 10*20*30 points regularly defined over a curvilinear mesh.

• An array of point coordinates. This arrays stores the position of each vertex explicitly.

The coordinate of each point is defined as follows: coordinate = coordinate_array(idx_flat)". The (i,j,k) index can

be converted to this flat index as follows: idx_flat = k*(npts_x*npts_y) + j*nptr_x + i.

A curvilinear grid consists of cells of the same type. This type is determined by the dimensionality of the dataset

(based on the extents) and can either be vertex (0D), line (1D), quad (2D) or hexahedron (3D).

VTK Data Model 18

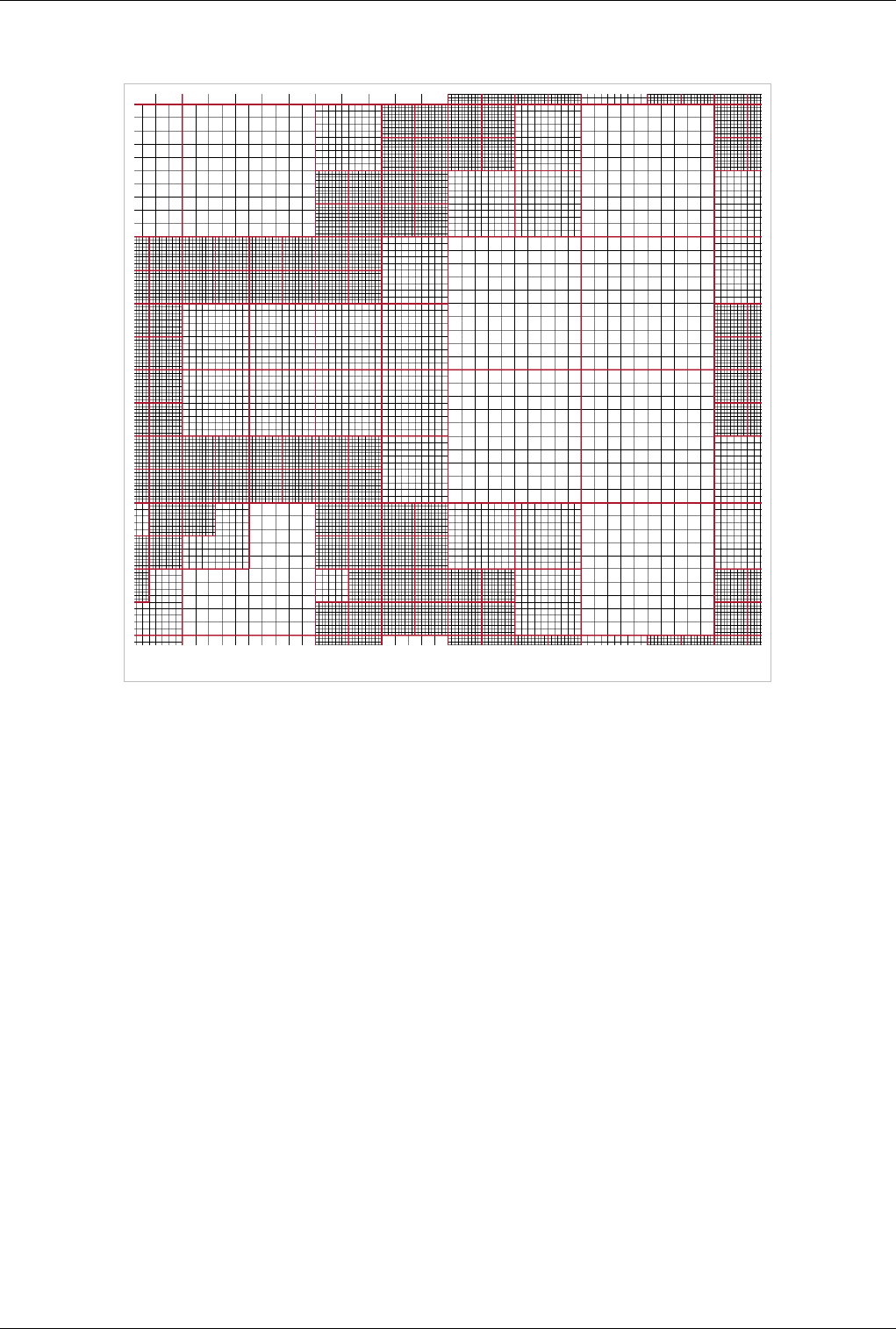

AMR Dataset

Figure 3.7 AMR dataset

VTK natively support Berger-Oliger type AMR (Adaptive Mesh Refinement) datasets, as shown in Figure 3.7. An

AMR dataset is essentially a collection of uniform rectilinear grids grouped under increasing refinement ratios

(decreasing spacing). VTK's AMR dataset does not force any constraint on whether and how these grids should

overlap. However, it provides support for masking (blanking) sub-regions of the rectilinear grids using an array of

bytes. This allows VTK to process overlapping grids with minimal artifacts. VTK can automatically generate the

masking arrays for Berger-Oliger compliant meshes.

VTK Data Model 19

Unstructured Grid

Figure 3.8 Unstructured grid

An unstructured grid such as Figure 3.8 is the most general primitive dataset type. It stores topology and point

coordinates explicitly. Even though VTK uses a memory-efficient data structure to store the topology, an

unstructured grid uses significantly more memory to represent its mesh. Therefore, use an unstructured grid only

when you cannot represent your dataset as one of the above datasets. VTK supports a large number of cell types, all

of which can exist (heterogeneously) within one unstructured grid. The full list of all cell types supported by VTK

can be found in the file vtkCellType.h in the VTK source code. Here is the list as of when this document was

written:

VTK_EMPTY_CELL VTK_VERTEX

VTK_POLY_VERTEX VTK_LINE

VTK_POLY_LINE VTK_TRIANGLE

VTK_TRIANGLE_STRIP VTK_POLYGON

VTK_PIXEL VTK_QUAD

VTK_TETRA VTK_VOXEL

VTK_HEXAHEDRON VTK_WEDGE

VTK_PYRAMID VTK_PENTAGONAL_PRISM

VTK_HEXAGONAL_PRISM VTK_QUADRATIC_EDGE

VTK_QUADRATIC_TRIANGLE VTK_QUADRATIC_QUAD

VTK_QUADRATIC_TETRA VTK_QUADRATIC_HEXAHEDRON

VTK_QUADRATIC_WEDGE VTK_QUADRATIC_PYRAMID

VTK Data Model 20

VTK_BIQUADRATIC_QUAD VTK_TRIQUADRATIC_HEXAHEDRON

VTK_QUADRATIC_LINEAR_QUAD VTK_QUADRATIC_LINEAR_WEDGE

VTK_BIQUADRATIC_QUADRATIC_WEDGE VTK_BIQUADRATIC_QUADRATIC_HEXAHEDRON

VTK_BIQUADRATIC_TRIANGLE VTK_CUBIC_LINE

VTK_CONVEX_POINT_SET VTK_POLYHEDRON

VTK_PARAMETRIC_CURVE VTK_PARAMETRIC_SURFACE

VTK_PARAMETRIC_TRI_SURFACE VTK_PARAMETRIC_QUAD_SURFACE

VTK_PARAMETRIC_TETRA_REGION VTK_PARAMETRIC_HEX_REGION

Many of these cell types are straightforward. For details, see VTK documentation.

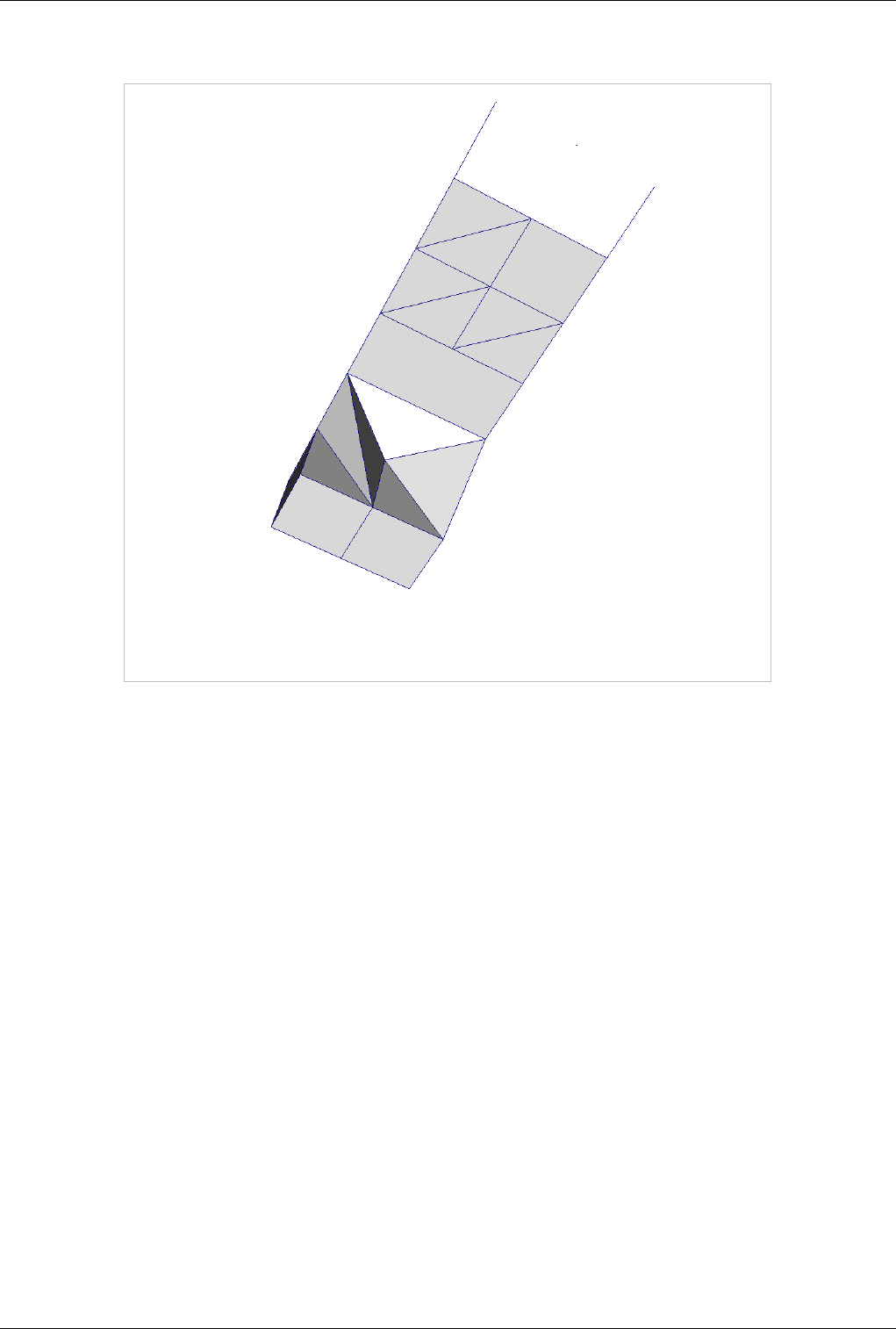

Polygonal Grid (Polydata)

Figure 3.9 Polygonal grid

A polydata such as Figure 3.9 is a specialized version of an unstructured grid designed for efficient rendering. It

consists of 0D cells (vertices and polyvertices), 1D cells (lines and polylines) and 2D cells (polygons and triangle

strips). Certain filters that generate only these cell types will generate a polydata. Examples include the Contour and

Slice filters. An unstructured grid, as long as it has only 2D cells supported by polydata, can be converted to a

polydata using the Extract Surface filter. A polydata can be converted to an unstructured grid using Clean to Grid.

VTK Data Model 21

Table

Table 3.1

A table like Table 3.1 is a tabular dataset that consists of rows and columns. All chart views have been designed to

work with tables. Therefore, all filters that can be shown within the chart views generate tables. Also, tables can be

directly loaded using various file formats such as the comma separated values format. Tables can be converted to

other datasets as long as they are of the right format. Filters that convert tables include Table to Points and Table to

Structured Grid.

Multiblock Dataset

Figure 3.10 Multiblock dataset

You can think of a multi-block dataset as a tree of datasets where the leaf nodes are "simple" datasets. All of the data

types described above, except AMR, are "simple" datasets. Multi-block datasets are used to group together datasets

that are related. The relation between these datasets is not necessarily defined by ParaView. A multi-block dataset

can represent an assembly of parts or a collection of meshes of different types from a coupled simulation.

VTK Data Model 22

Multi-block datasets can be loaded or created within ParaView using the Group filter. Note that the leaf nodes of a

multi-block dataset do not all have to have the same attributes. If you apply a filter that requires an attribute, it will

be applied only to blocks that have that attribute.

Multipiece Dataset

Figure 3.11 Multipiece dataset

Multi-piece datasets such as Figure 3.11 are similar to multi-block datasets in that they group together simple

datasets with one key difference. Multi-piece datasets group together datasets that are part of a whole mesh - datasets

of the same type and with same attributes. This data structure is used collect datasets produced by a parallel

simulation without having to append the meshes together. Note that there is no way to create a multi-piece dataset

within ParaView, but only by using certain readers. Furthermore, multi-piece datasets act, for the most part, as

simple datasets. For example, it is not possible to extract individual pieces or obtain information about them.

Information Panel 23

Information Panel

Introduction

Clicking on the Information button on the Object Inspector will take you to the Information Panel. The purpose of

this panel is to provide you with information about the output of the currently selected source, reader or filter. The

information on this panel is presented in several sections. We start by describing the sections that are applicable to

all dataset types then we describe data specific sections.

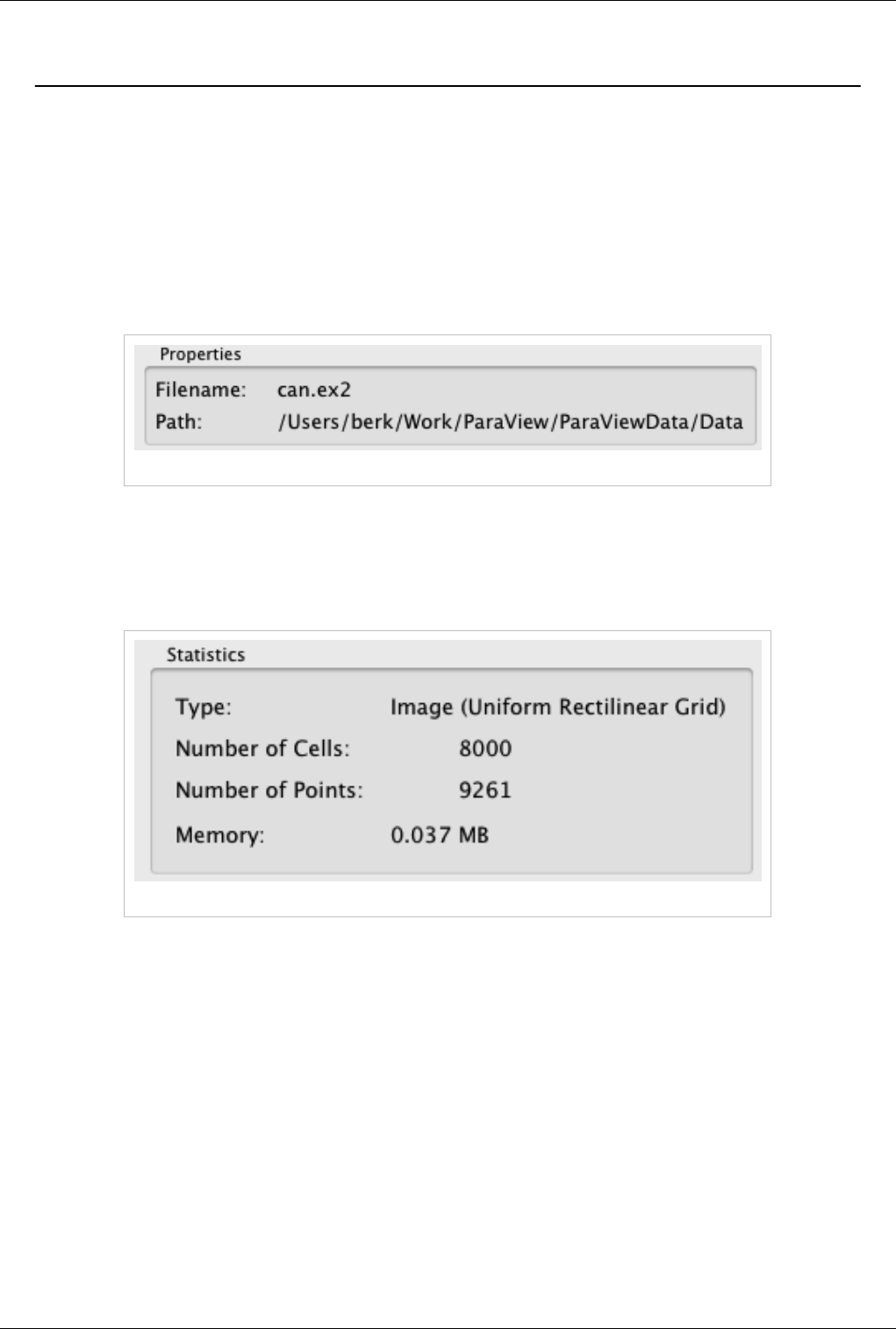

File Properties

Figure 3.12 File properties

If the current pipeline object is a reader, the top section will display the name of the file and its full path, as in Figure

3.12.

Data Statistics

Figure 3.13 Data statistics

The Statistics section displays high-level information about the dataset including the type, number of points and cells

and the total memory used. Note that the memory is for the dataset only and does not include memory used by the

representation (for example, the polygonal mesh that may represent the surface). All of this information is for the

current time step.

Information Panel 24

Array Information

Figure 3.14 Array information

The data shown in Figure 3.14 shows the association (point, cell or global), name, type and range of each array in the

dataset. In the example, the top three attributes are point arrays, the middle three are cell arrays and the bottom three

are global (field) arrays. Note that for vectors, the range of each component is shown separately. In case, the range

information does not fit the frame, the tooltip will display all of the values.

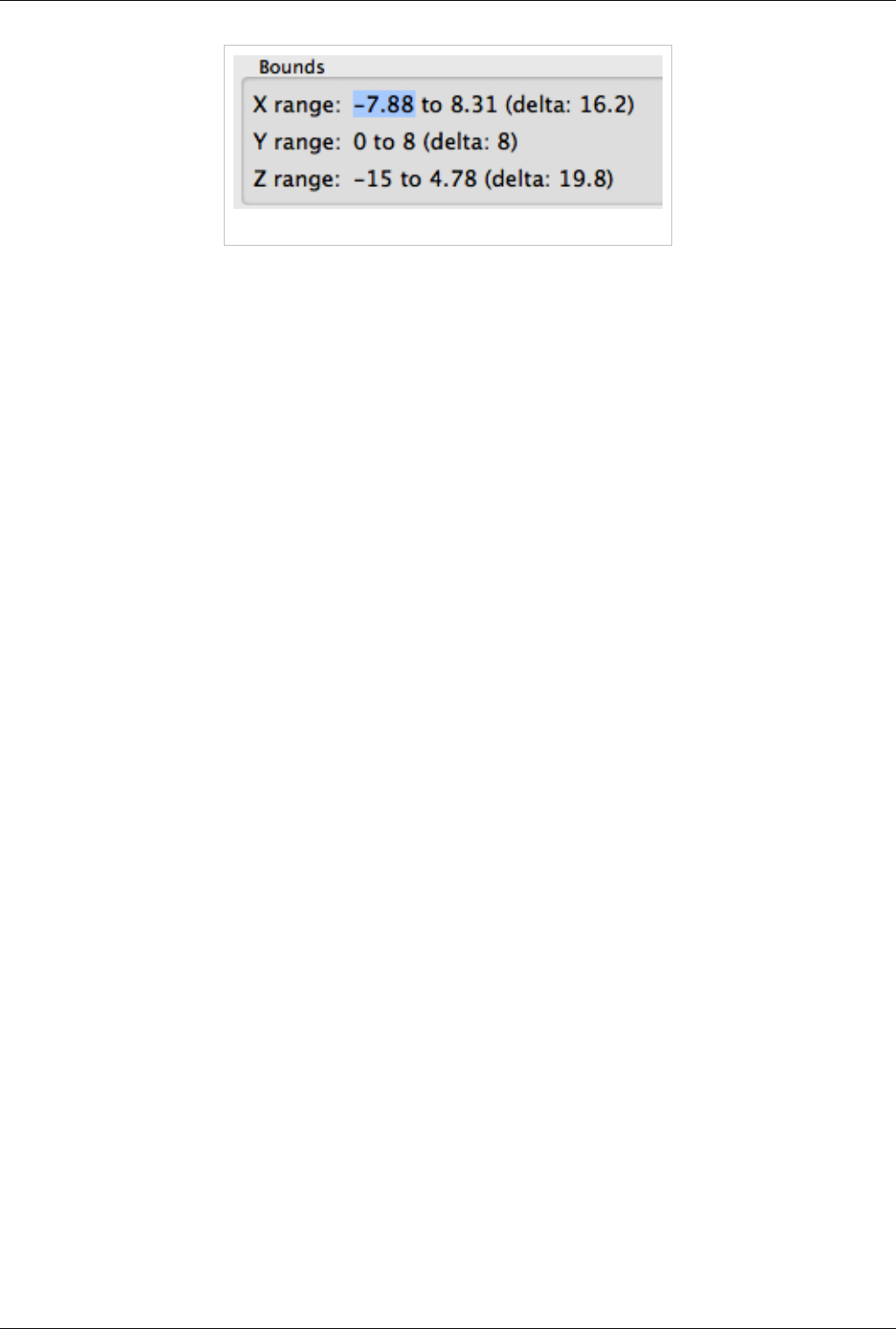

Bounds

Figure 3.15 Bounds information

The Bounds section will display the spatial bounds of the dataset. These are the coordinates of the smallest

axis-aligned hexahedron that contains the dataset as well as its dimensions, as in Figure 3.15.

Information Panel 25

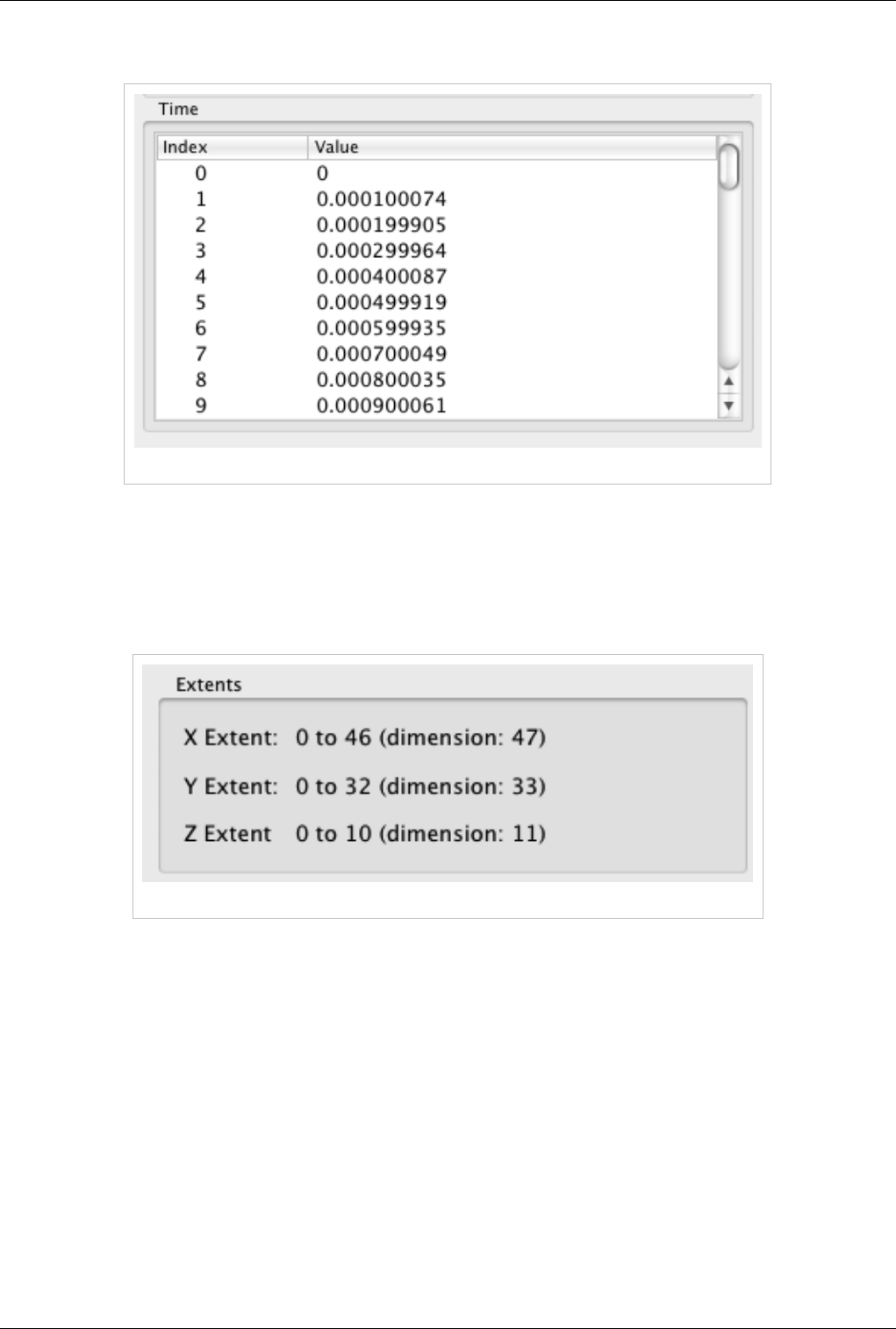

Timesteps

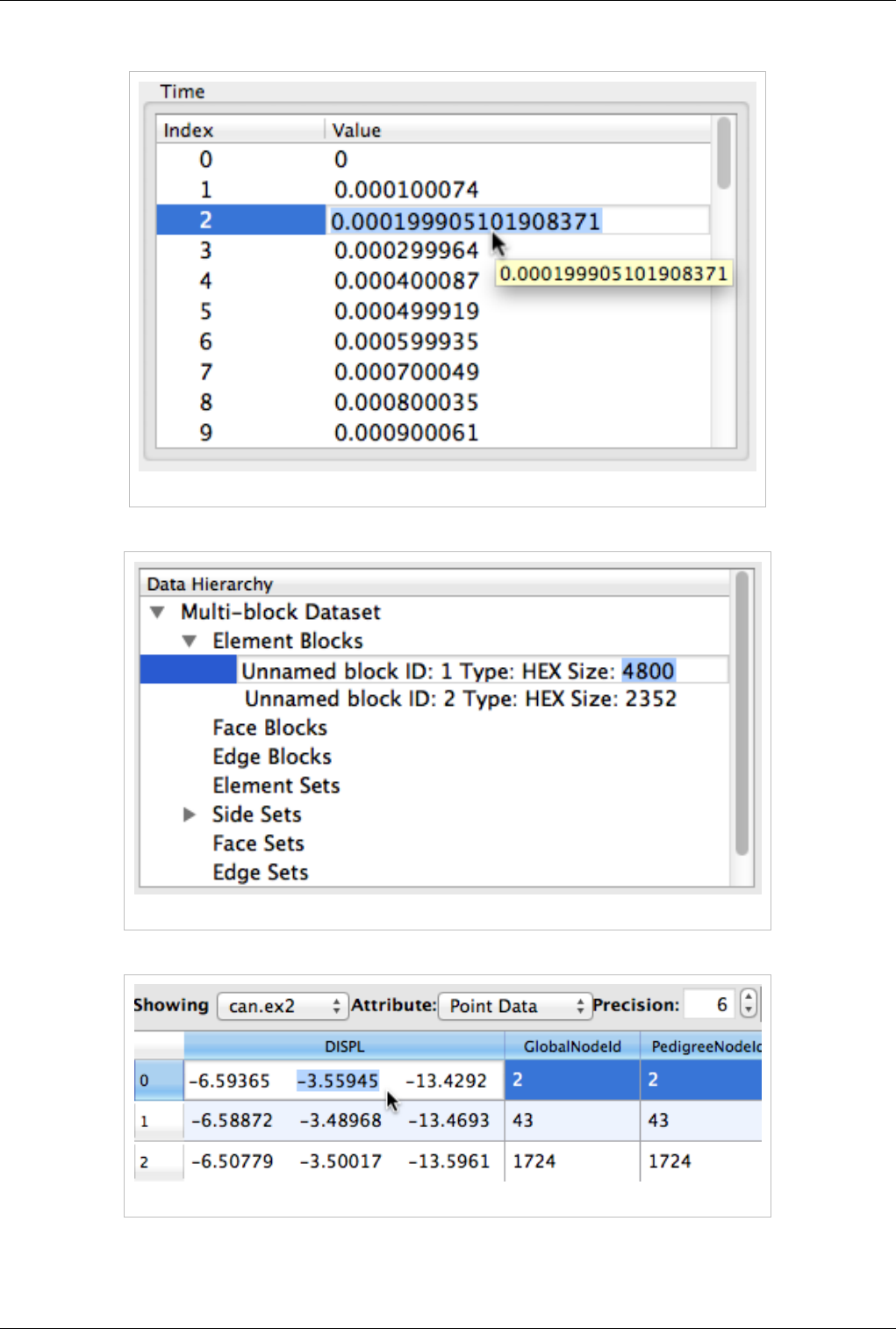

Figure 3.16 Time section showing timesteps

The Time section (see Figure 3.16) shows the index and value of all time steps available in a file or produceable by a

source. Note that this section display values only when a reader or source is selected even though filters downstream

of such sources also have time varying outputs. Also note that usually only one time step is loaded at a time.

Extents

Figure 3.17 Extents

The Extents section, seen in Figure 3.17, is available only for structured datasets (uniform rectilinear grid, rectilinear

grid and curvilinear grid). It displays the extent of all three indices that define a structured datasets. It also displays

the dimensions (the number of points) in each direction. Note that these refer to logical extents and the labels X

Extent, Y Extent and Z Extent can be somehow misleading for curvilinear grids.

Information Panel 26

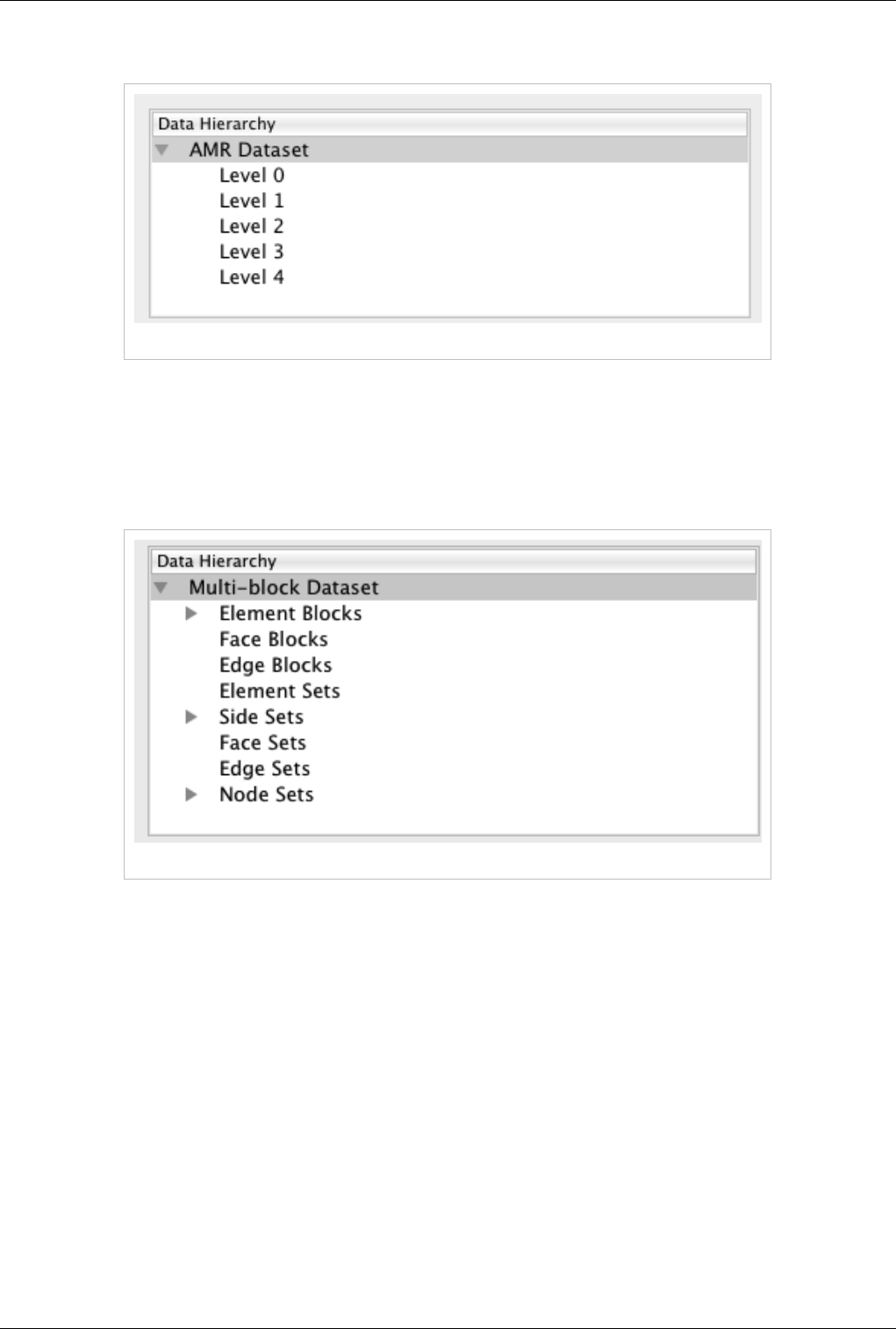

Data Hierarchy (AMR)

Figure 3.18 Data hierarchy for AMR

For AMR datasets, the Data Hierarchy section, Figure 3.18, shows the various refinement levels available in the

dataset. Note that you can drill down to each level by clicking on it. All of the other sections will immediately update

for the selected level. For information on the whole dataset, select the top parent called "AMR Dataset."

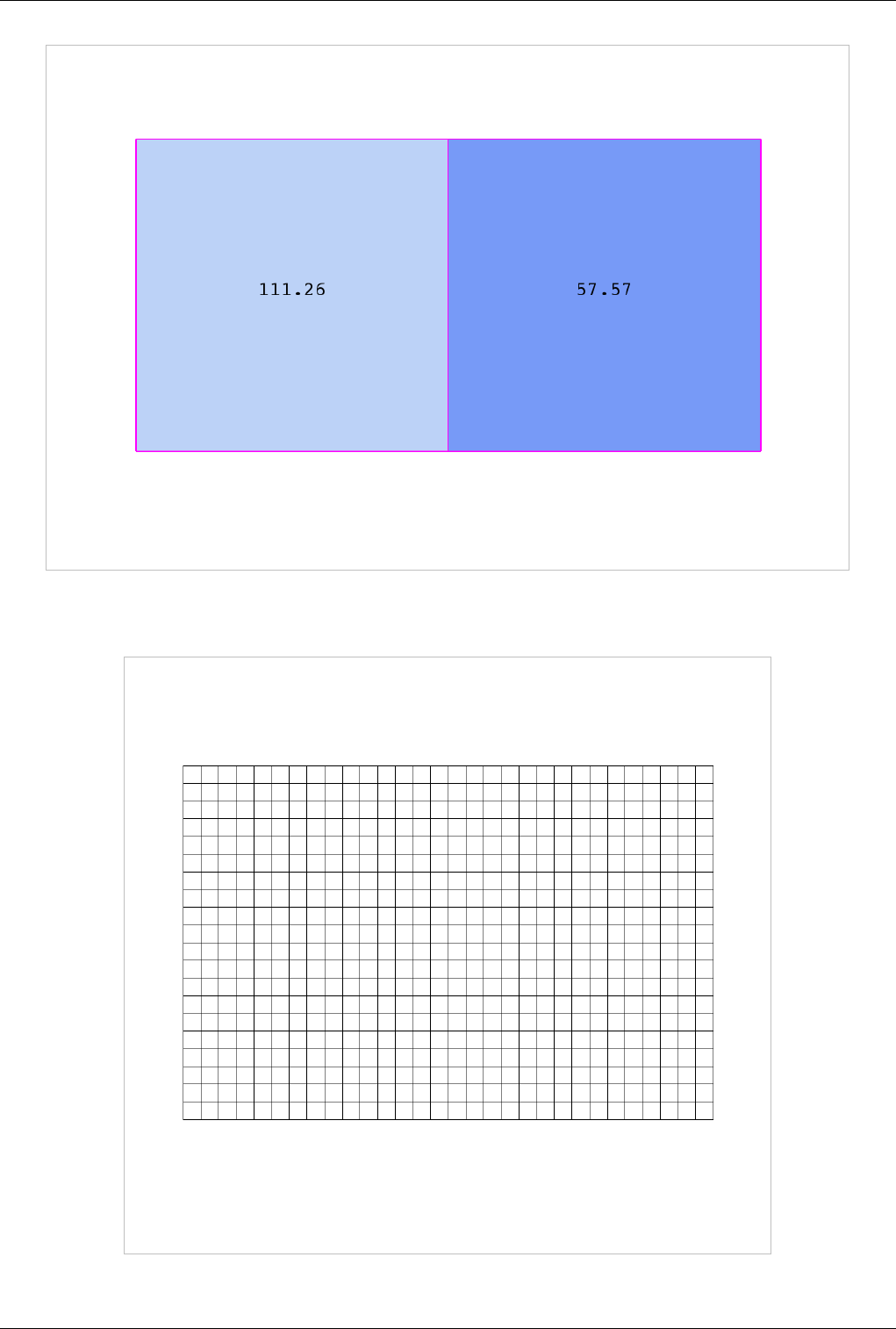

Data Hierarchy (Multi-Block Dataset)

Figure 3.19 Data hierarchy for multi-block datasets

For multi-block datasets, the Data Hierarchy section shows the tree that forms the multi-block dataset. By default,

only the first level children are shown. You can drill down further by clicking on the small triangle to the left of each

node. Note that you can drill down to each block by clicking on it. All of the other sections will immediately update

for the selected block. For information on the whole dataset, select the top parent called "Multi-Block Dataset".

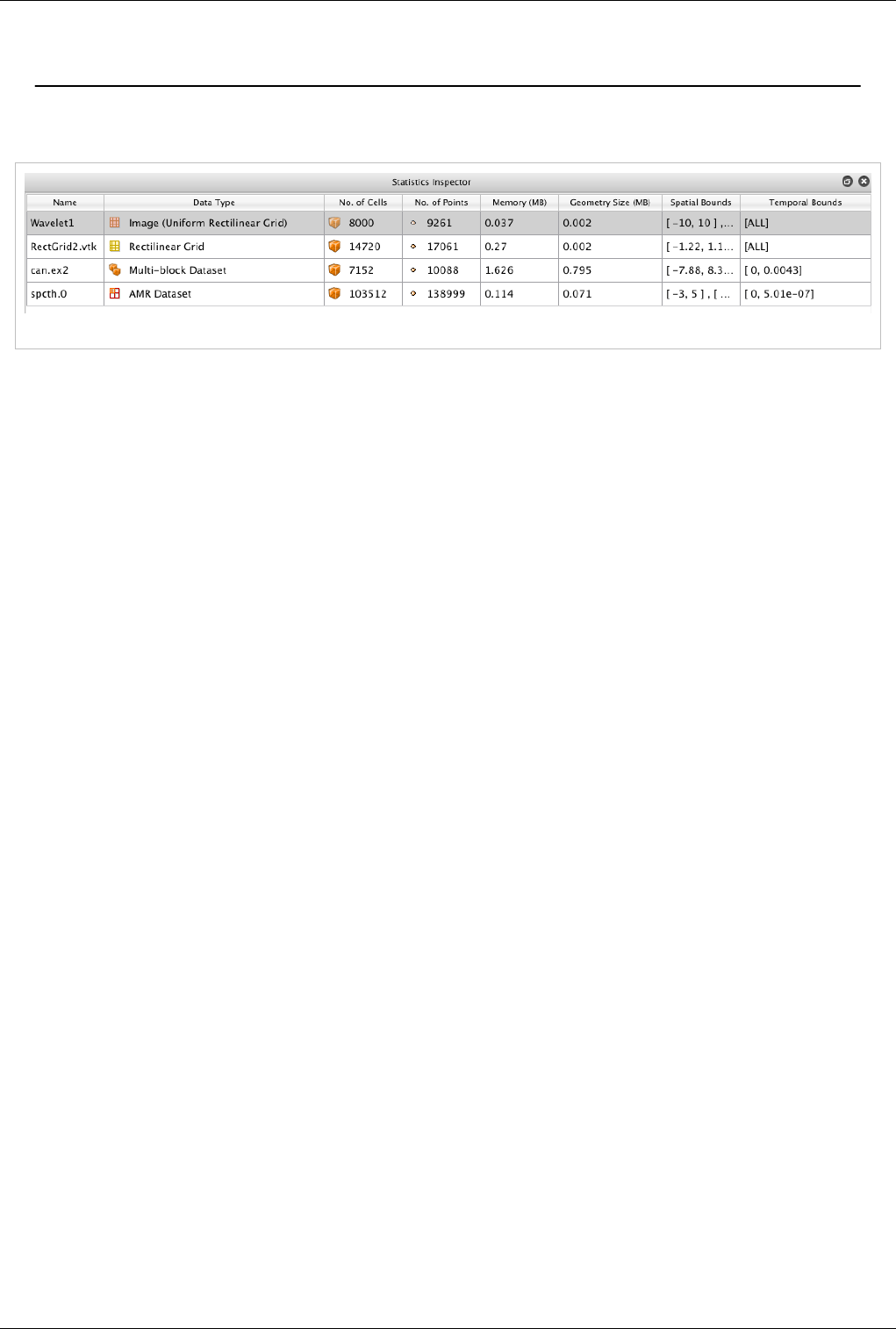

Statistics Inspector 27

Statistics Inspector

Statistics Inspector

Figure 3.20 The Statistics Inspector

The Statistics Inspector (View| Statistics Inspector) can be used to obtain high-level information about the data

produced by all sources, readers and filters in the ParaView pipeline. Some of this information is also available

through the Information panel. The information presented in the Statistics Inspector include the name of the pipeline

object that produced the data, the data type, the number of cells and points, memory used by the dataset, memory

used by the visual representation of the dataset (usually polygonal data), and the spatial bounds of the dataset (the

minimum and maximum time values for all available time steps).

Note that the selection in the Statistics Inspector is linked with the Pipeline Browser. Selecting an entry in the

Selection Inspector will update the Pipeline Browser and vice versa.

The Statics Inspector shows memory needed/used by every pipeline filter or source. However, it must be noted that

the actual memory used may still not align with this information due to the following caveats:

1. Shallow Copied Data: Several filters, such as Calculator, Shrink etc. that don't change the topology often pass

the attribute arrays without copying any of the heavy data (known as shallow copying). In that case though the

Statics Inspector will overestimate the memory used.

2. Memory for Data Datastructures: All data in VTK/ParaView is maintained in data-structures i.e.

vtkDataObject subclasses. Any data-structure requires memory. Generally, this memory needed is considerably

small compared to the heavy data i.e. the memory needed to save the topology, geometry, attribute arrays, etc.,

however in case of composite datasets and especially, AMR datasets with very large number of blocks in the

order of 100K blocks, the memory used for the meta-data starts growing and can no longer be ignored. The

Statistics Inspector as well as the Information Tab does not take this memory needed for datastructures into

consideration and hence in such cases underestimates the memory needed.

ParaView 3.14 adds "Memory Inspector" widget for users to directly inspect the memory used on all the ParaView

processes.

Memory Inspector 28

Memory Inspector

Memory Inspector

The ParaView Memory Inspector Panel provides users a convenient way to monitor ParaView's memory usage

during interactive visualization, and developers a point-and-click interface for attaching a debugger to local or

remote client and server processes. As explained earlier, both the Information panel, and the Statistics inspector are

prone to over and under estimate the total memory used for the current pipeline. The Memory Inspector addresses

those issues through direct queries to the operating system. A number of diagnostic statistics are gathered and

reported. For example, the total memory used by all processes on a per-host basis, the total cumulative memory use

by ParaView on a per-host basis, and the individual per-rank use by each ParaView process are reported. When

memory consumption reaches a critical level, either the cumulatively on the host or in an individual rank, the

corresponding GUI element will turn red alerting the user that they are in danger of potentially being shut down.

This potentially gives them a chance to save state and restart the job with more nodes avoiding loosing their work.

On the flip side, knowing when you're not close to using the full capacity of available memory can be useful to

conserver computational resources by running smaller jobs. Of course the memory foot print is only one factor in

determining the optimal run size.

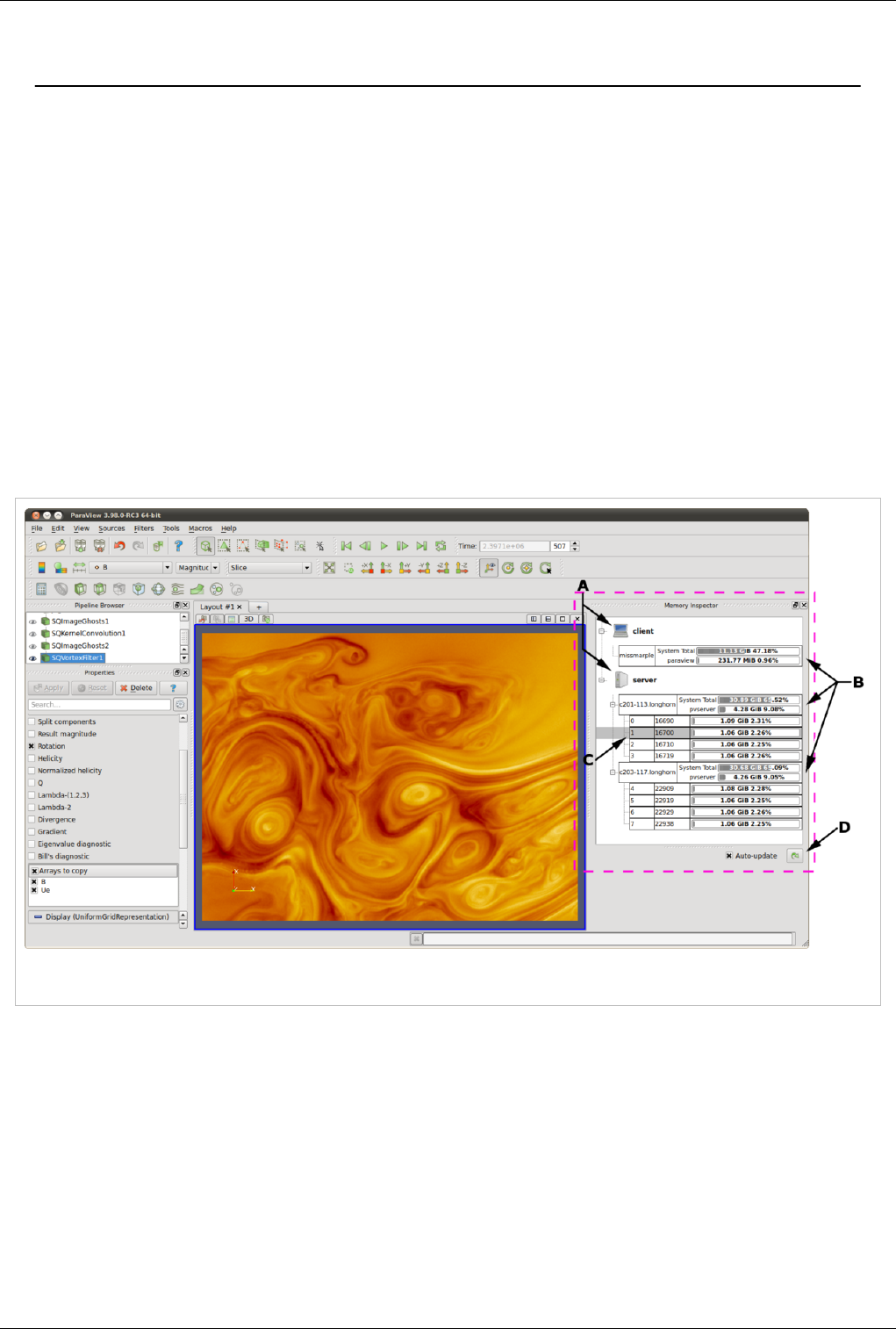

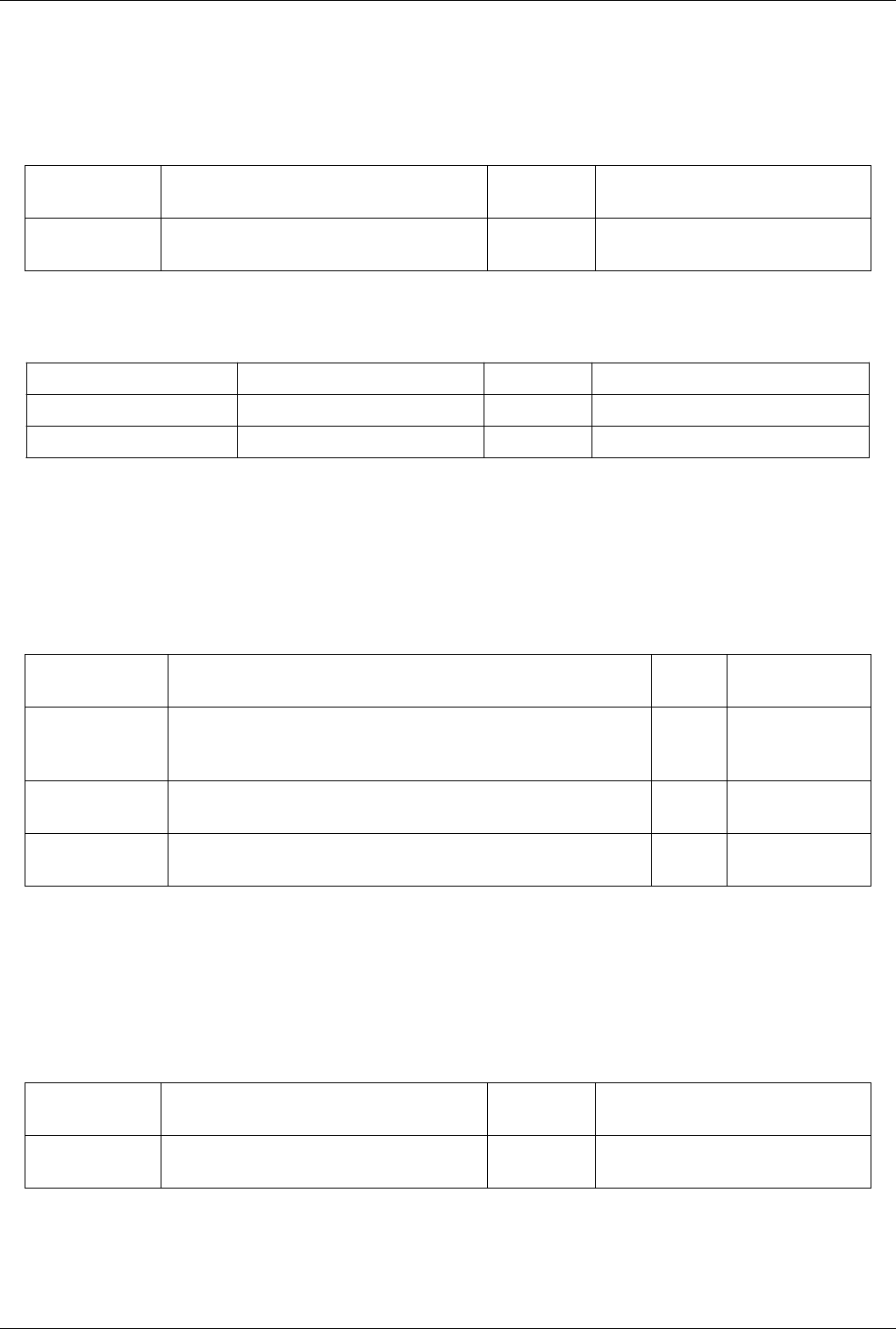

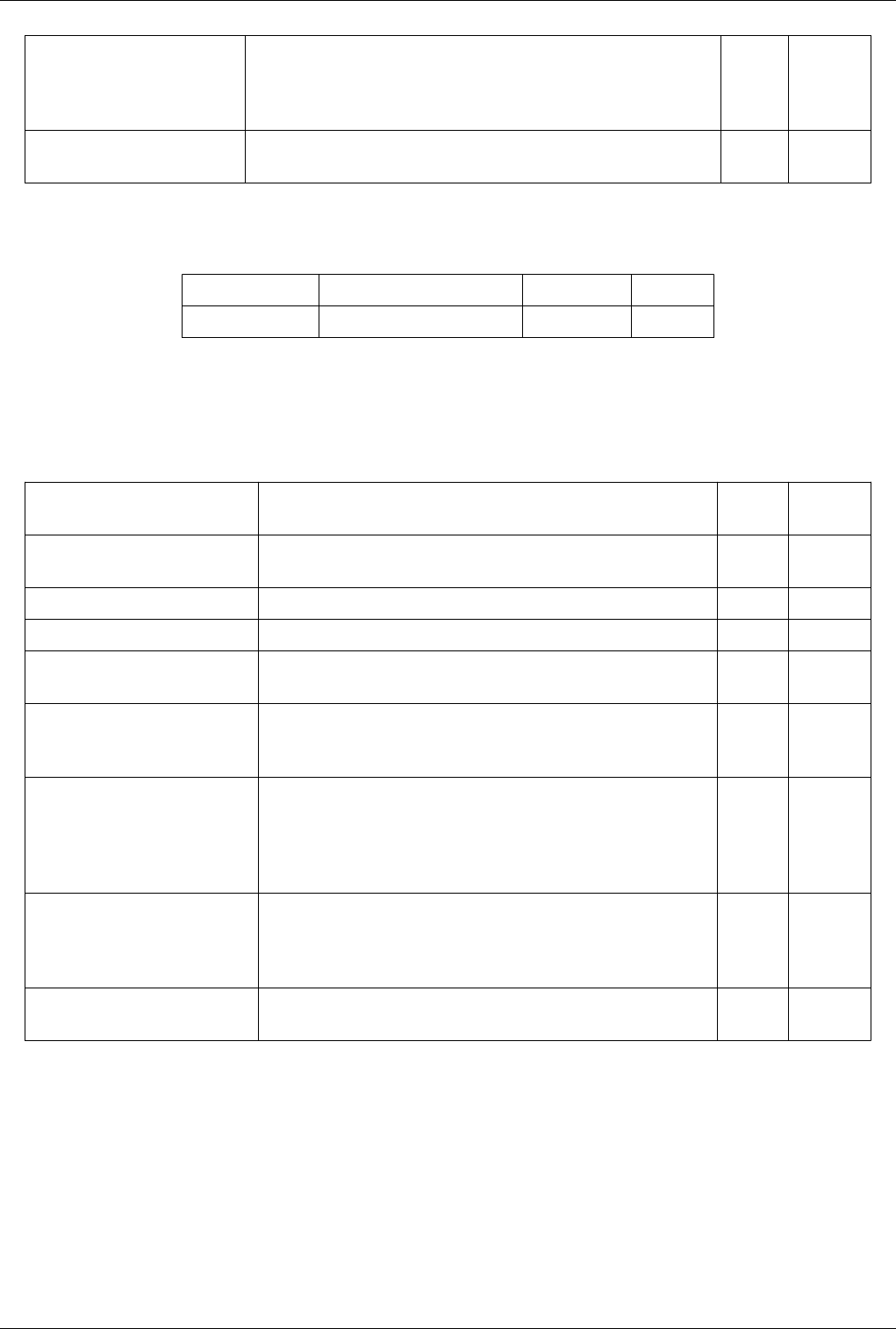

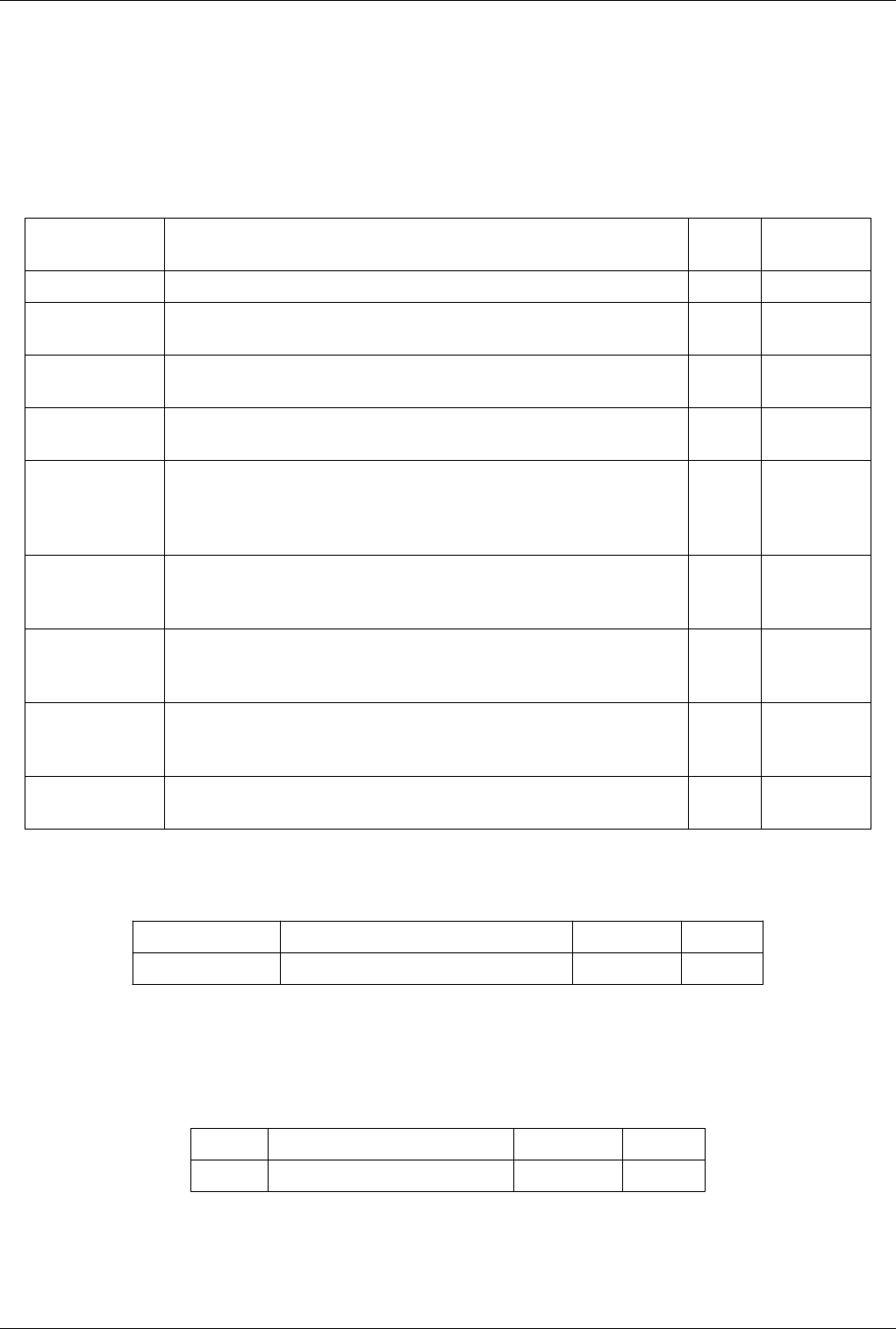

Figure The main UI elements of the memory inspector panel. A: Process Groups, B: Per-Host statistics, C: Per-Rank statistics, and D: Update

controls.

Memory Inspector 29

User Interface and Layout

The memory inspector panel displays information about the current memory usage on the client and server hosts.

Figure 1 shows the main UI elements labeled A-D. A number of additional features are provided via specialized

context menus accessible from the Client and Server group's, Host's, and Rank's UI elements. The main UI elements

are:

A. Process Groups

Client

There is always a client group which reports statistics about the ParaView client.

Server

When running in client-server mode a server group reports statistics about the hosts where pvserver

processes are running.

Data Sever

When running in client-data-render server mode a data server group reports statistics about the hosts

where pvdataserver processes are running.

Render Sever

When running in client-data-render server mode a render server group reports statistics about the hosts

where pvrenderserver processes are running.

B. Per-Host Statistics

Per-host statics are reported for each host where a ParaView process is running. Hosts are organized by host

name which is shown in the first column. Two statics are reported: 1) total memory used by all processes on

the host, and 2) ParaView's cumulative usage on this host. The absolute value is printed in a bar that shows the

percentage of the total available used. On systems where job-wide resource limits are enforced, ParaView is

made aware of the limits via the PV_HOST_MEMORY_LIMIT environment variable in which case,

ParaView's cumulative percent used is computed using the smaller of the host total and resource limit.

C. Per-Rank Statistics

Per-rank statistics are reported for each rank on each host. Ranks are organized by MPI rank number and

process id, which are shown in the first and second columns. Each rank's individual memory usage is reported

as a percentage used of the total available to it. On systems where either job-wide or per process resource

limits are enforced, ParaView is made aware of the limits via the PV_PROC_MEMORY_LIMIT

environment variable or through standard usage of Unix resource limits. The ParaView rank's percent used is

computed using the smaller of the host total, job-wide, or Unix resource limits.

D. Update Controls

By default, when the panel is visible, memory use statistics are updated automatically as pipeline objects are

created, modified, or destroyed, and after the scene is rendered. Updates may be triggered manually by using

the refresh button. Automatic updates may be disabled by un-checking the Auto-update check box. Queries to

remote system have proven to be very fast even for fairly large jobs , hence the auto-update feature is enabled

by default.

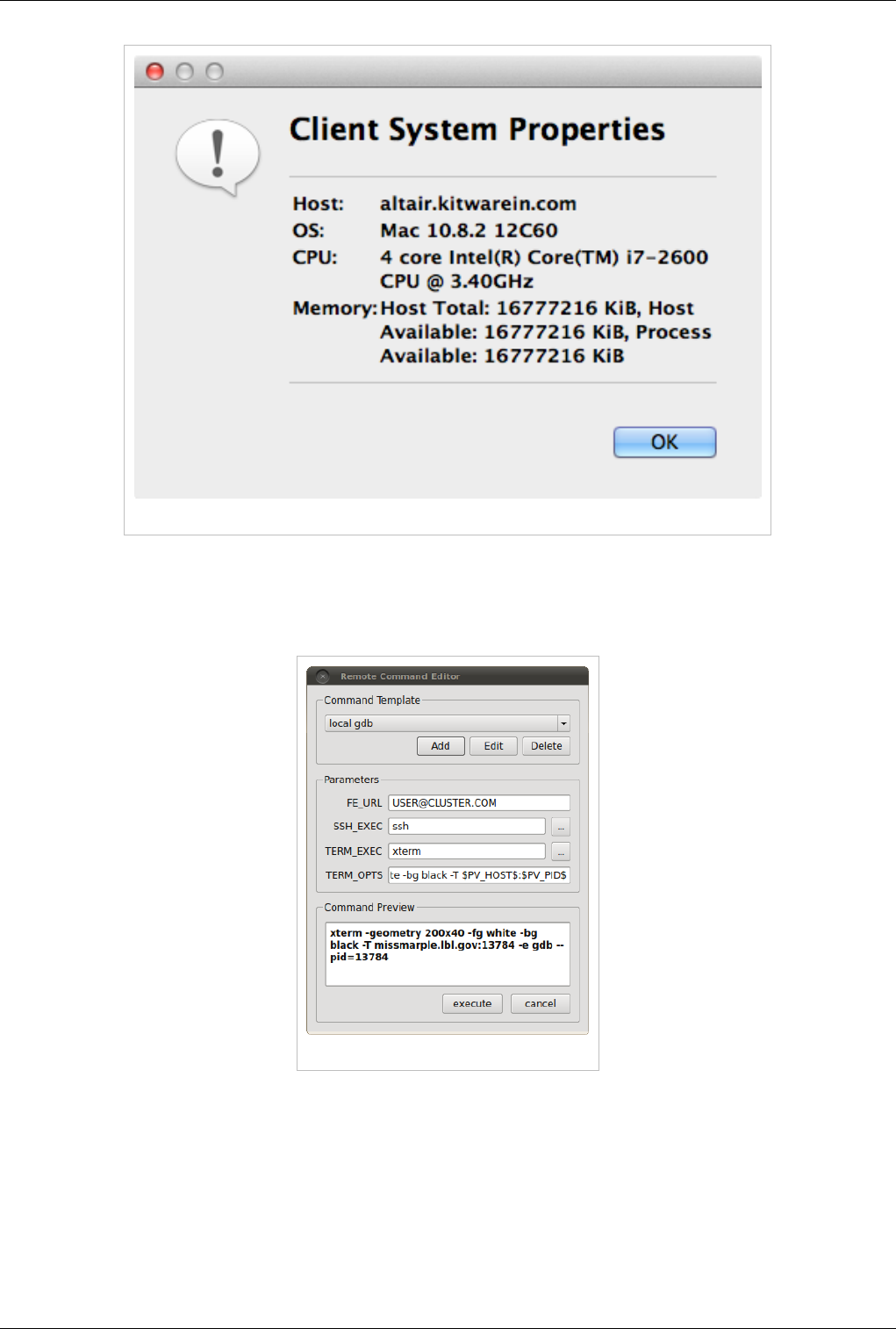

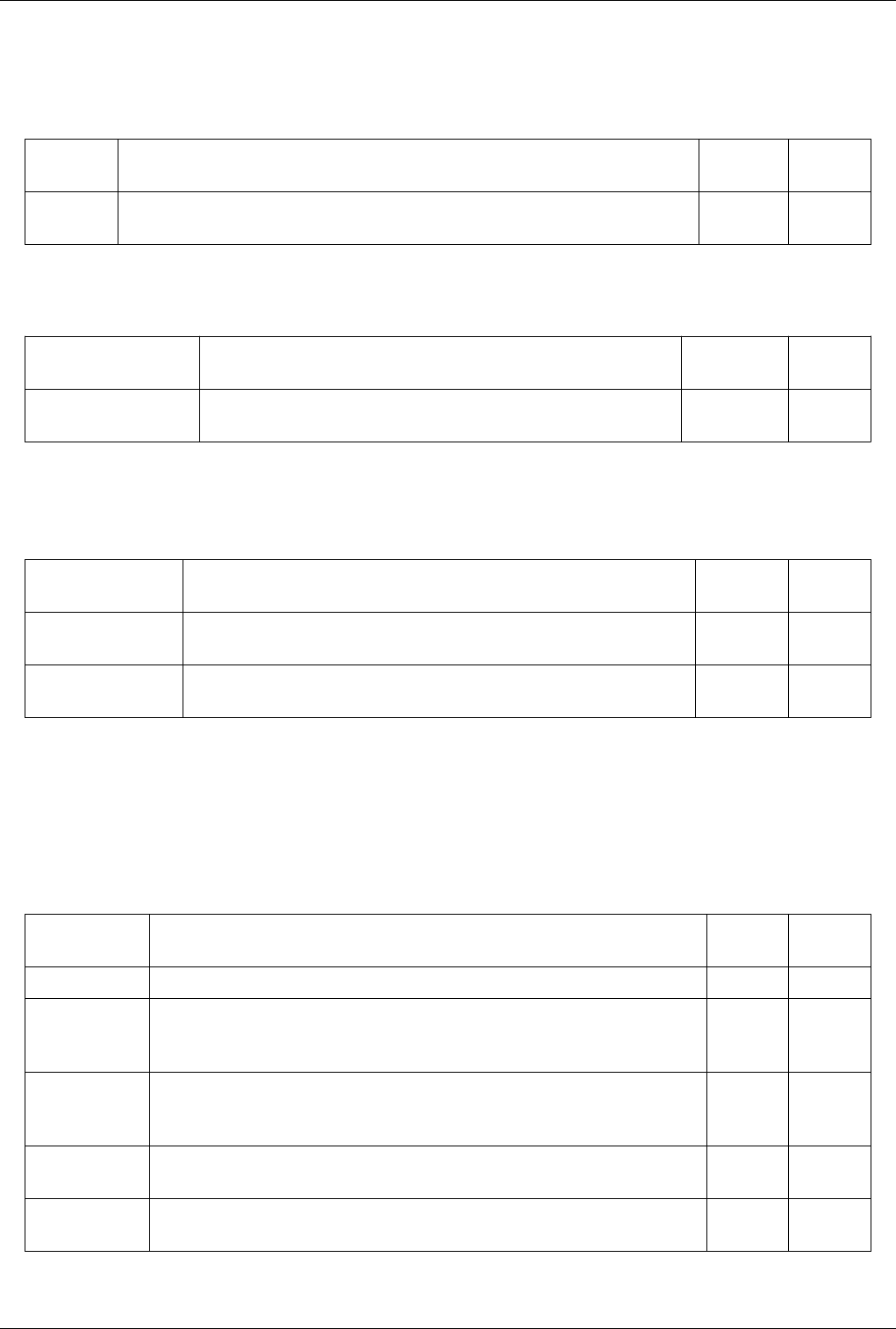

Host Properties Dialog

The Host context menu provides a Host properties dialog which reports various system details such as the OS

version, CPU version, and memory installed and available to the the host context and process context. While,

the Memory Inspector panel reports memory use as a percent of the available in the given context, the host

properties dialog reports the total installed and available in each context. Comparing the installed and available

memory can be used to determine if you are impacted by resource limits.

Memory Inspector 30

Figure: Host properties dialog

Advanced Debugging Features

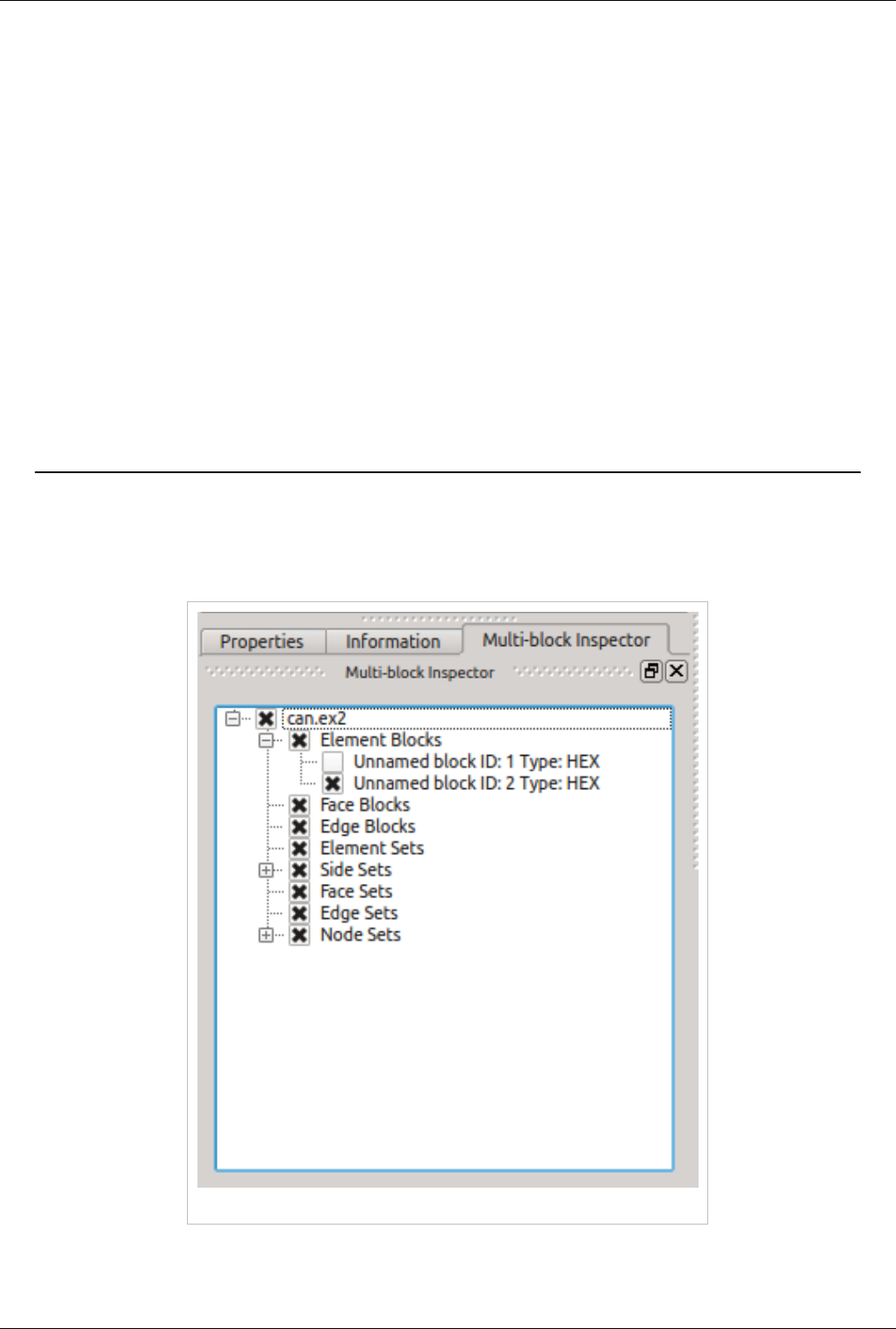

Remote Commands

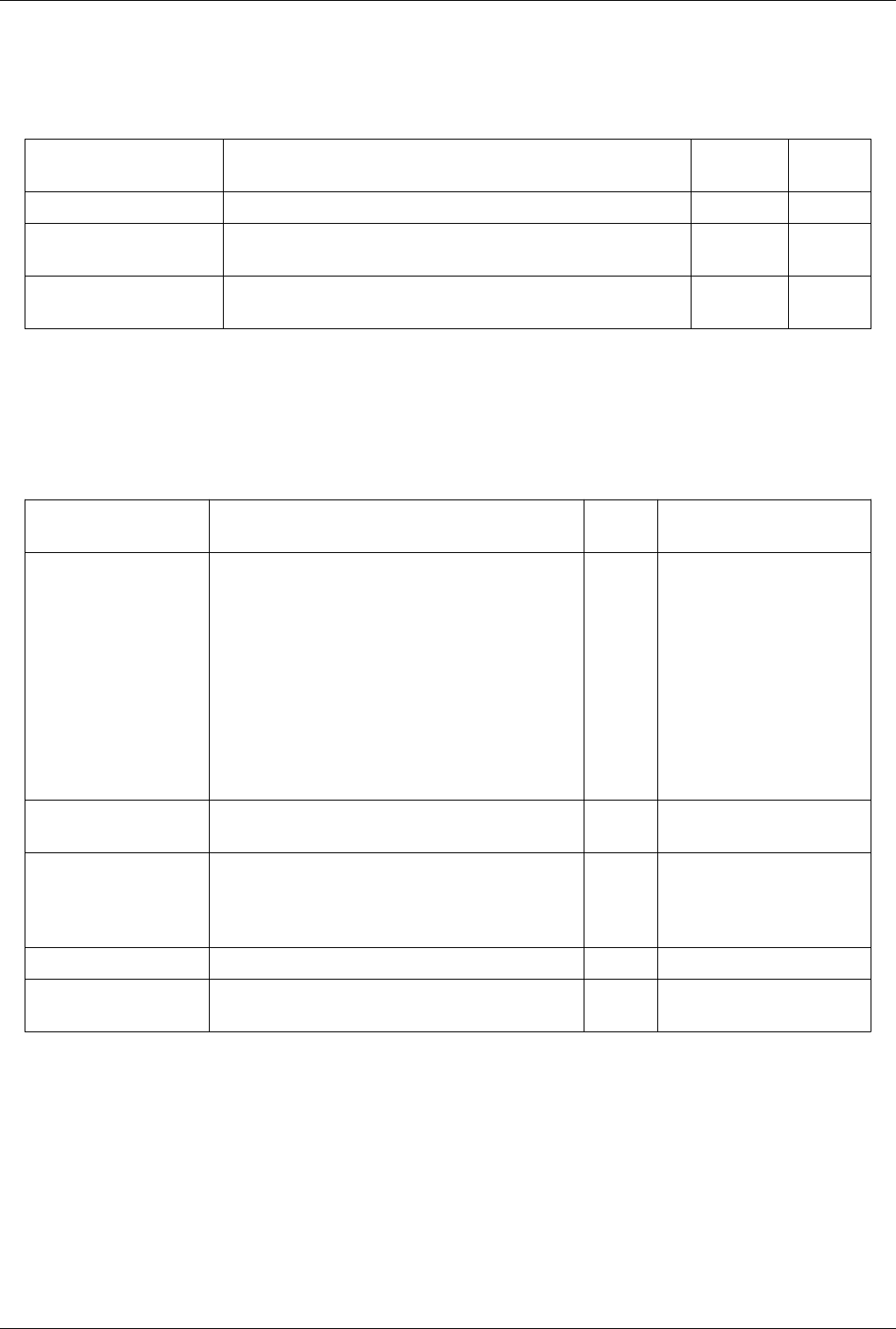

Figure The remote command dialog.

The Memory Inspector Panel provides a remote (or local) command feature allowing one to execute a shell

command on a given host. This feature is exposed via a specialized Rank item context menu. Because we have

information such as a rank's process id, individual processes may be targeted. For example this allows one to quickly

attach a debugger to a server process running on a remote cluster. If the target rank is not on the same host as the

client then the command is considered remote otherwise it is consider local. Therefor remote commands are executed

via ssh while local commands are not. A list of command templates is maintained. In addition to a number of

pre-defined command templates, users may add templates or edit existing ones. The default templates allow one to:

Memory Inspector 31

• attach gdb to the selected process

• run top on the host of the selected process

• send a signal to the selected process

Prior to execution, the selected template is parsed and a list of special tokens are replaced with runtime determined

or user provide values. User provided values can be set and modified in the dialog's parameter group. The command,

with tokens replaced, is shown for verification in the dialog's preview pane.

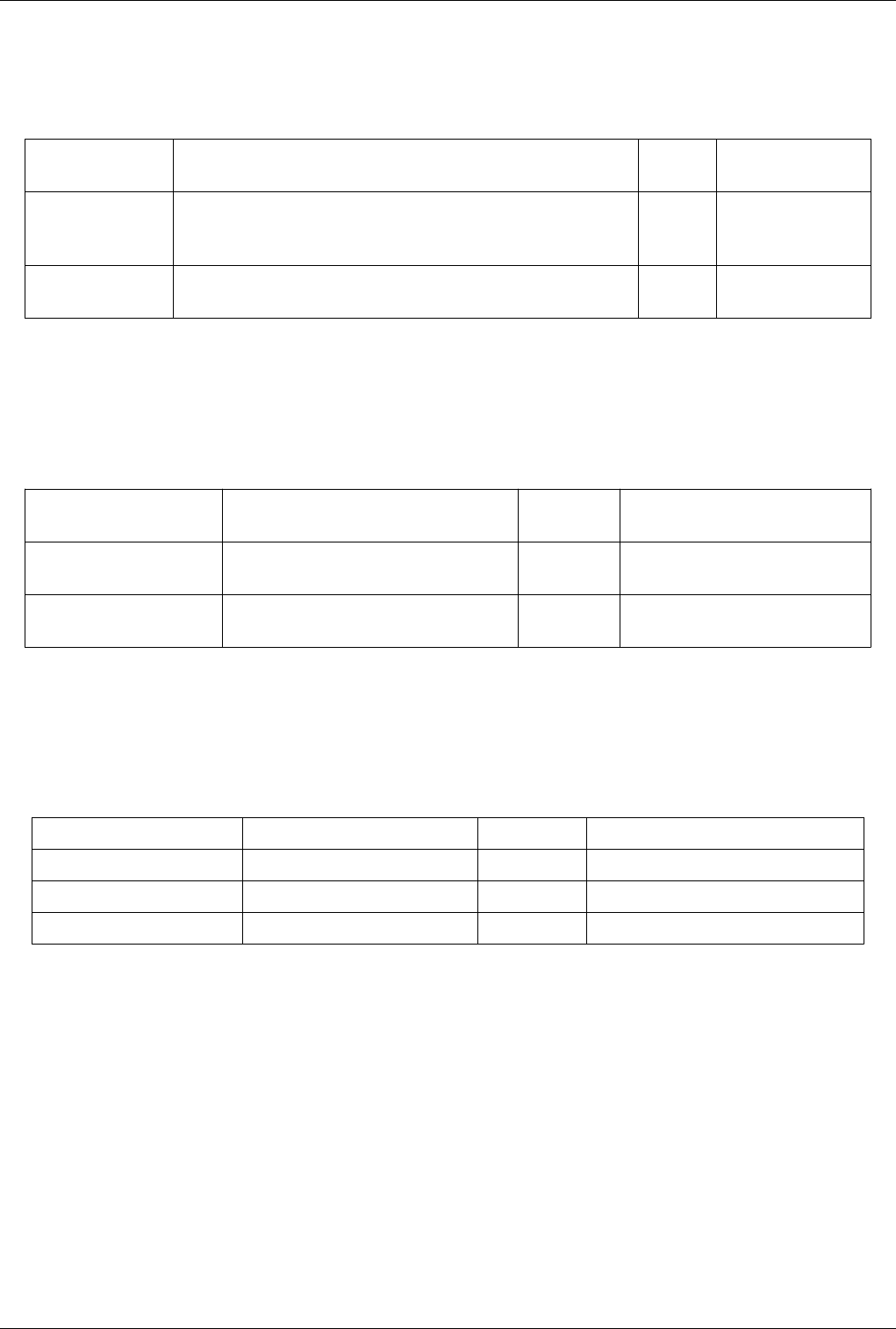

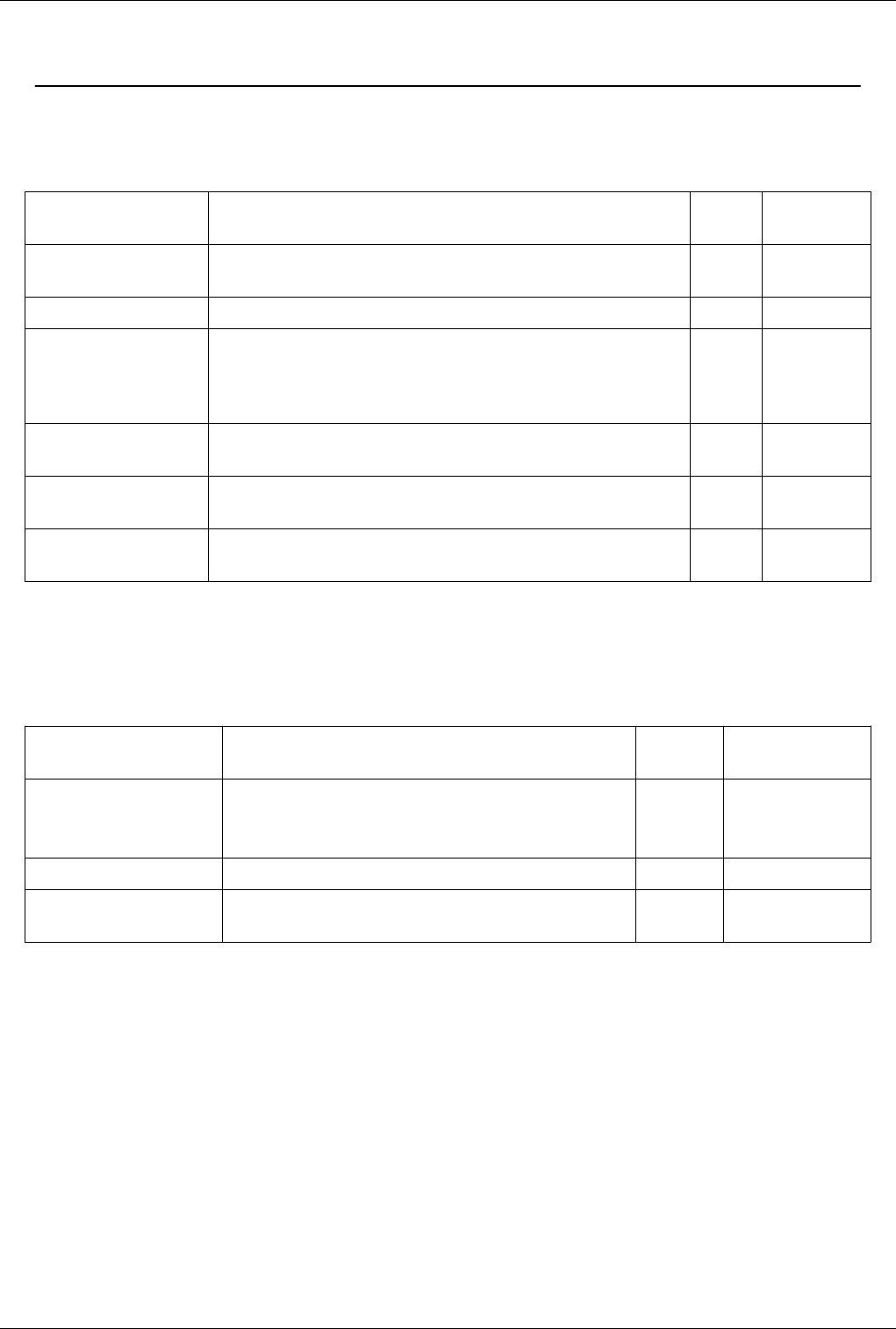

The following tokens are available and may be used in command templates as needed:

$TERM_EXEC$

The terminal program which will be used execute commands. On Unix systems typically xterm is used, while

on Windows systems typically cmd.exe is used. If the program is not in the default path then the full path must

be specified.

$TERM_OPTS$

Command line arguments for the terminal program. On Unix these may be used to set the terminals window

title, size, colors, and so on.

$SSH_EXEC$

The program to use to execute remote commands. On Unix this is typically ssh, while on Windows one option

is plink.exe. If the program is not in the default path then the full path must be specified.

$FE_URL$

Ssh URL to use when the remote processes are on compute nodes that are not visible to the outside world. This

token is used to construct command templates where two ssh hops are made to execute the command.

$PV_HOST$

The hostname where the selected process is running.

$PV_PID$

The process-id of the selected process.

Note: On Window's the debugging tools found in Microsoft's SDK need to be installed in addition to Visual Studio

(eg. windbg.exe). The ssh program plink.exe for Window's doesn't parse ANSI escape codes that are used by Unix

shell programs. In general the Window's specific templates need some polishing.

Stack Trace Signal Handler

The Process Group's context menu provides a back trace signal handler option. When enabled, a signal handler is

installed that will catch signals such as SEGV, TERM, INT, and ABORT and print a stack trace before the process

exits. Once the signal handler is enabled one may trigger a stack trace by explicitly sending a signal. The stack trace

signal handler can be used to collect information about crashes, or to trigger a stack trace during deadlocks, when it's

not possible to ssh into compute nodes. Often sites that restrict users ssh access to compute nodes often provide a

way to signal a running processes from the login node. Note, that this feature is only available on systems the

provide support for POSIX signals, and currently we only have implemented stack-trace for GNU compatible

compilers.

Memory Inspector 32

Compilation and Installation Considerations

If the system on which ParaView will run has special resource limits enforced, such as job-wide memory use limits,

or non-standard per-process memory limits, then the system administrators need to provide this information to the

running instances of ParaView via the following environment variables. For example those could be set in the batch

system launch scripts.

PV_HOST_MEMORY_LIMIT

for reporting host-wide resource limits

PV_PROC_MEMORY_LIMIT

for reporting per-process memory limits that are not enforced via standard Unix resource limits.

A few of the debugging features (such as printing a stack trace) require debug symbols. These features will work

best when ParaView is built with CMAKE_BUILD_TYPE=Debug or for release builds

CMAKE_BUILD_TYPE=RelWithDebugSymbols.

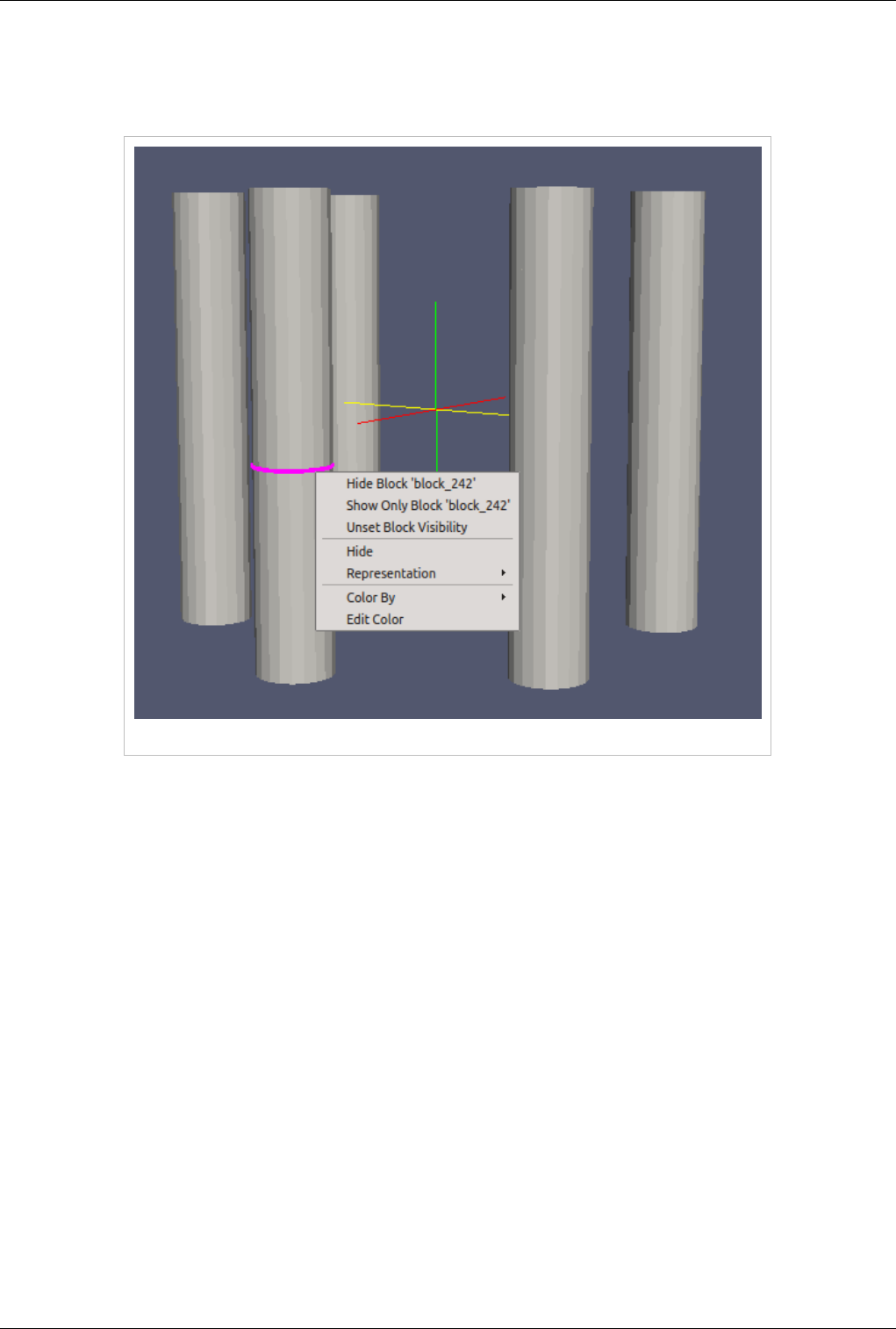

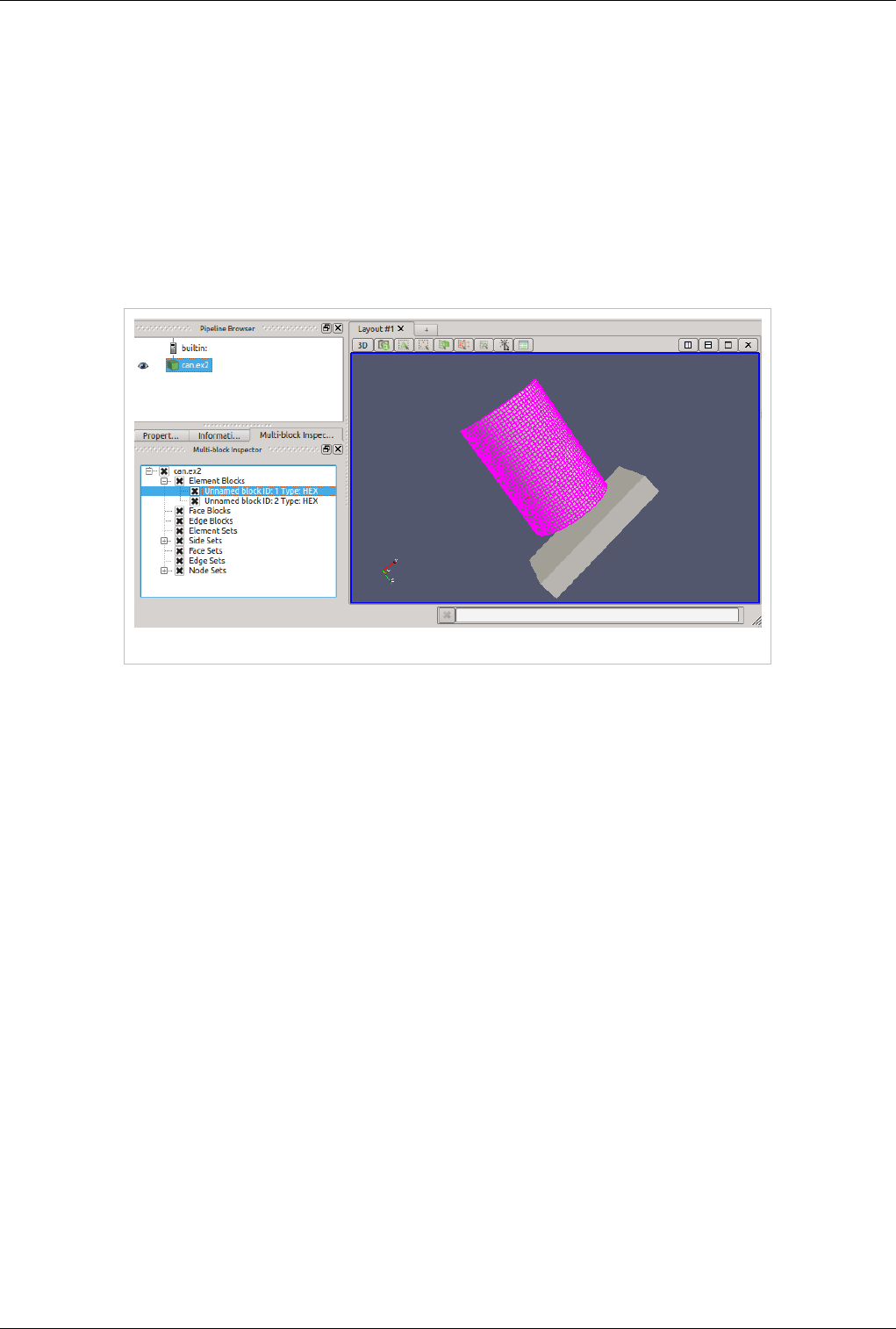

Multi-block Inspector

Introduction

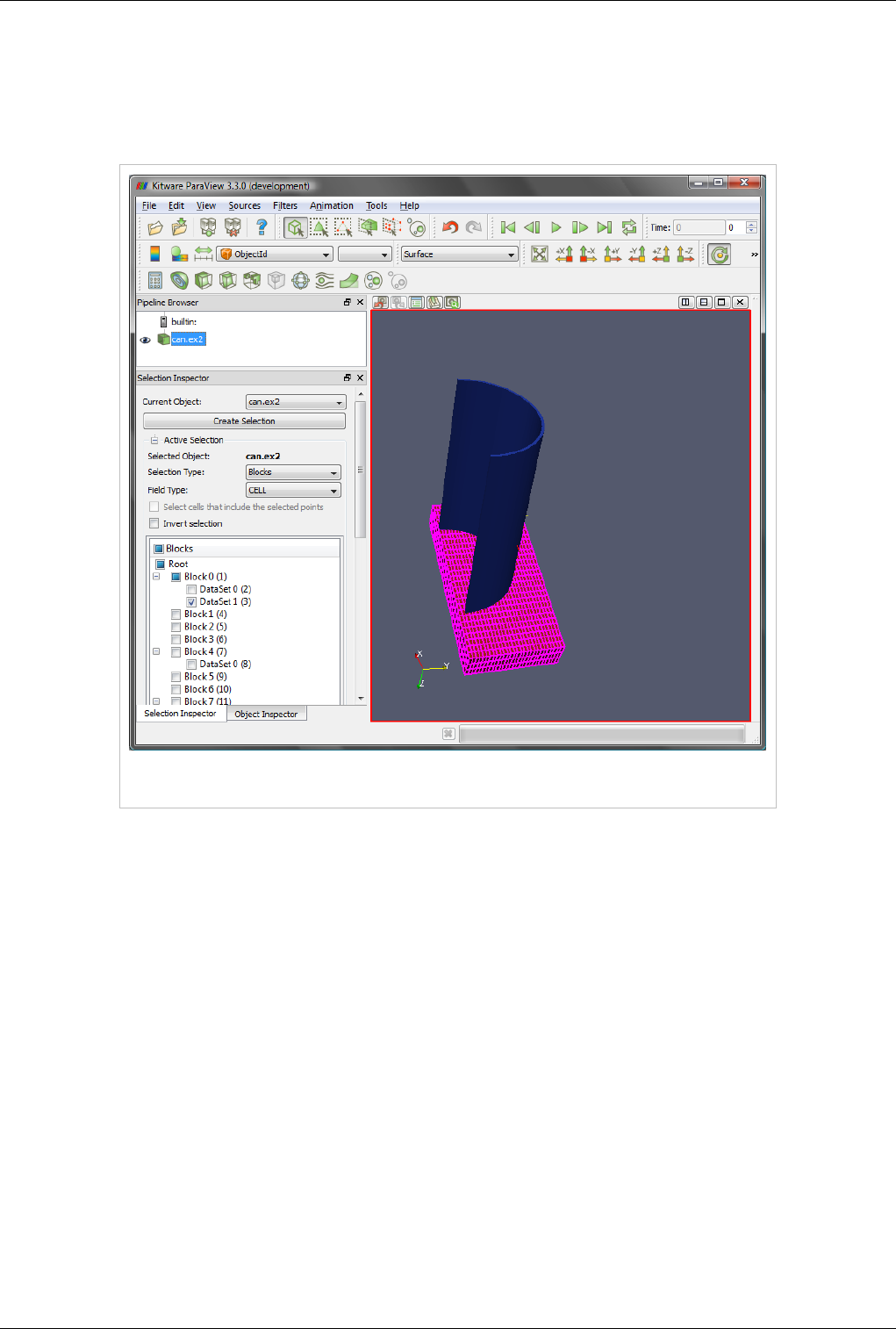

The Multi-Block Inspector panel allows users to change the rendering and display properties for individual blocks

within a multi-block data set.

Multi-block Inspector Panel

Multi-block Inspector 33

Block Visibility

The visibility of individual blocks can be changed by toggling the check box next to their name. By default, blocks

will inherit the visibility status of their parent block. Thus, changing the visibility of a non-leaf block will also

change the visibility of each child block.

Selection Linking

Selections made in the render-view will be linked with the items in the multi-block inspector and visa versa. Using

block selection (keyboard shortcut: 'b') and clicking on a block will result in that block being highlighted in the tree

view.

Block Selection Linking

35

Displaying Data

Views, Representations and Color Mapping

This chapter covers different mechanisms in ParaView for visualizing data. Through these visualizations, users are

able to gain unique insight on their data.

Understanding Views

Views

When the ParaView application starts up, you see a 3D viewport with an axes at the center. This is a view. In

ParaView, views are frames in which the data can be seen. There are different types of views. The default view that

shows up is a 3D view which shows rendering of the geometry extracted from the data or volumes or slices in a 3D

scene. You can change the default view in the Settings dialog (Edit | Settings (in case of Mac OS X, ParaView |

Preferences)).

Figure 4.1 ParaView view screen

There may be parameters that are available to the user that control how the data is displayed e.g. in case of 3D view,

the data can be displayed as wireframes or surfaces, where the user selects the color of the surface or uses a scalar for

coloring etc. All these options are known as Display properties and are accessible from the Display tab in the Object

Views, Representations and Color Mapping 36

Inspector.

Since there can be multiple datasets shown in a view, as well as multiple views, the Display tabs shows the

properties for the active pipeline object (changed by using the Pipeline Browser, for example) in the active view.

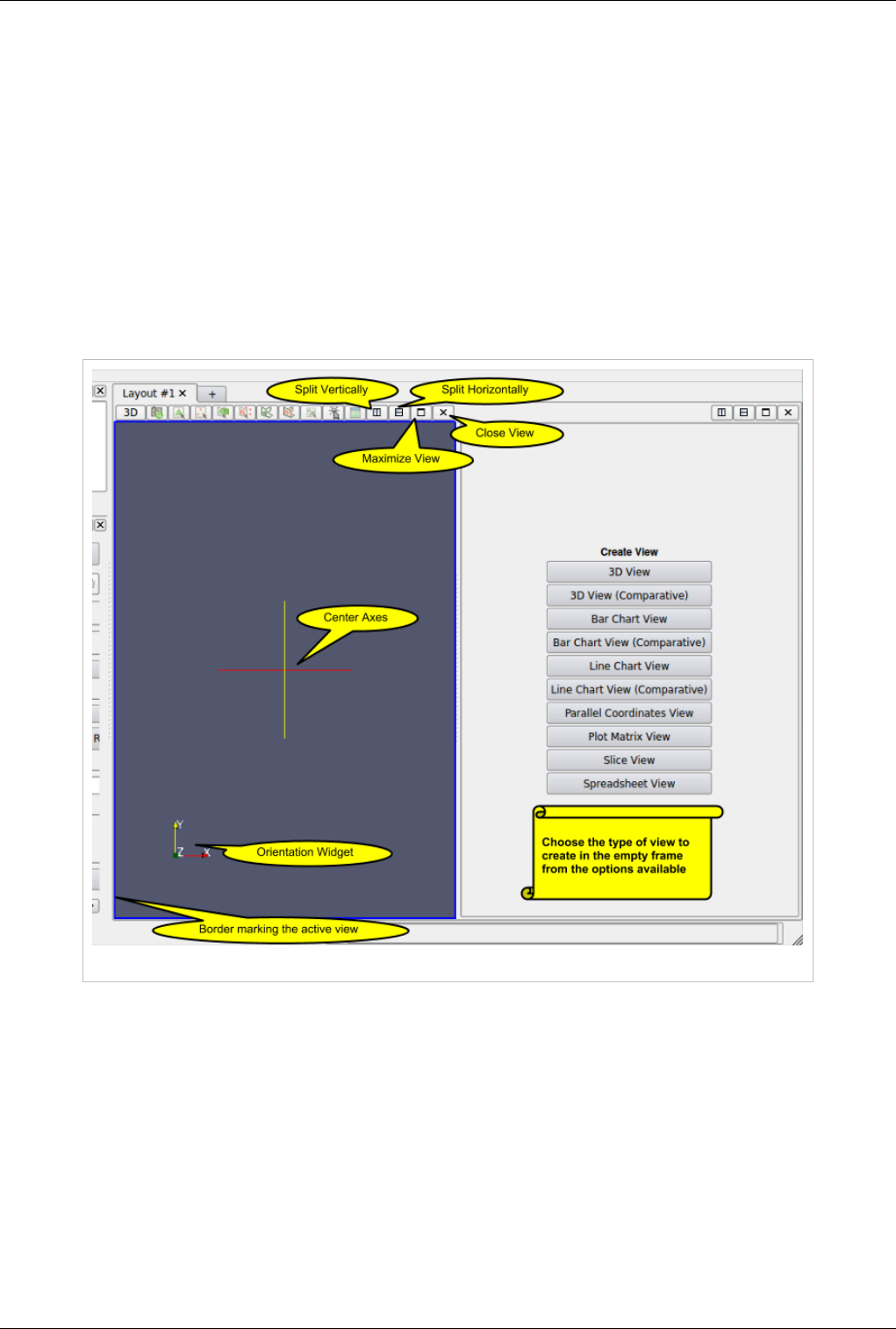

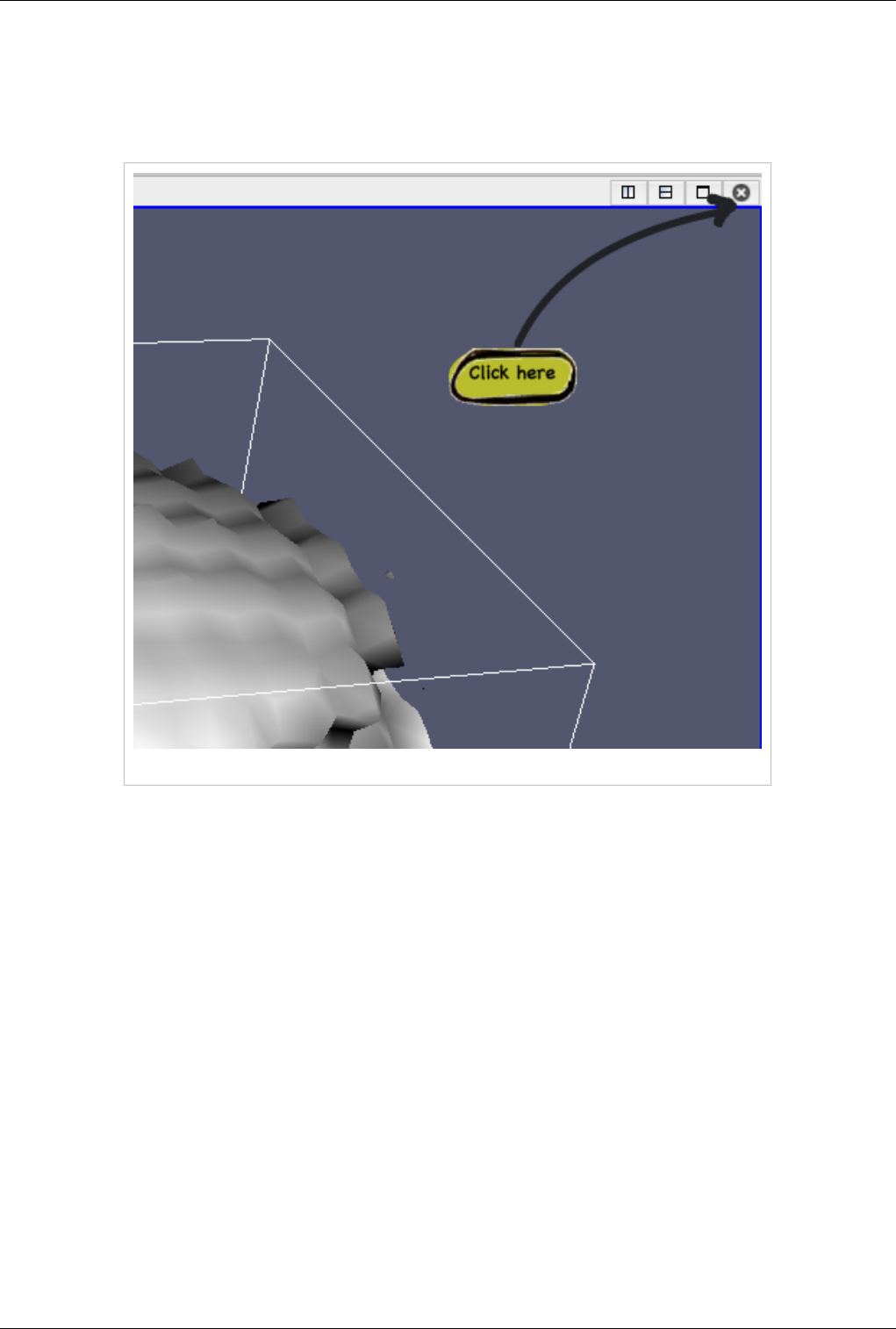

Multiple Views

ParaView supports showing multiple views side by side. To create multiple views, use the controls in the top right

corner of the view to split the frame vertically or horizontally. You can also maximize a particular view to

temporarily hide other views. Once a view-frame is split, you will see a list of buttons showing the different types of

views that you can create to place in that view. Simply click the button to create the view of your choice.

You can swap view position by dragging the title bar for a view frame and dropping it into the title bar for another

view.

Figure 4.2 View options in ParaView

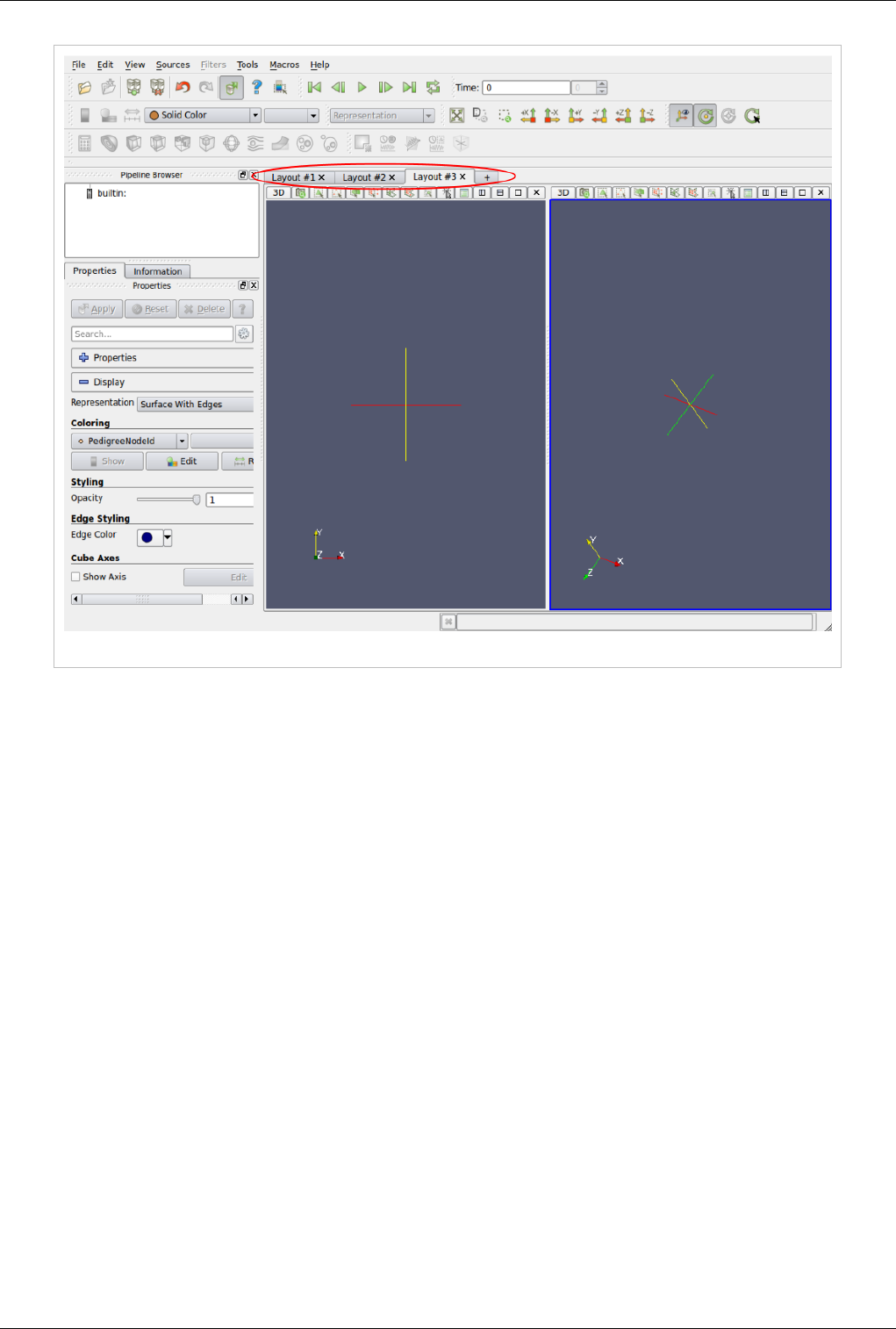

Starting with ParaView 3.14, users can create multiple tabs to hold a grid of views. When in tile-display mode, only

the active tab is shown on the tile-display. Thus, this can be used as a easy mechanism for switching views shown on

a tile display for presentations.

Views, Representations and Color Mapping 37

Figure 4.3 Multiple Tabs for laying out views in ParaView

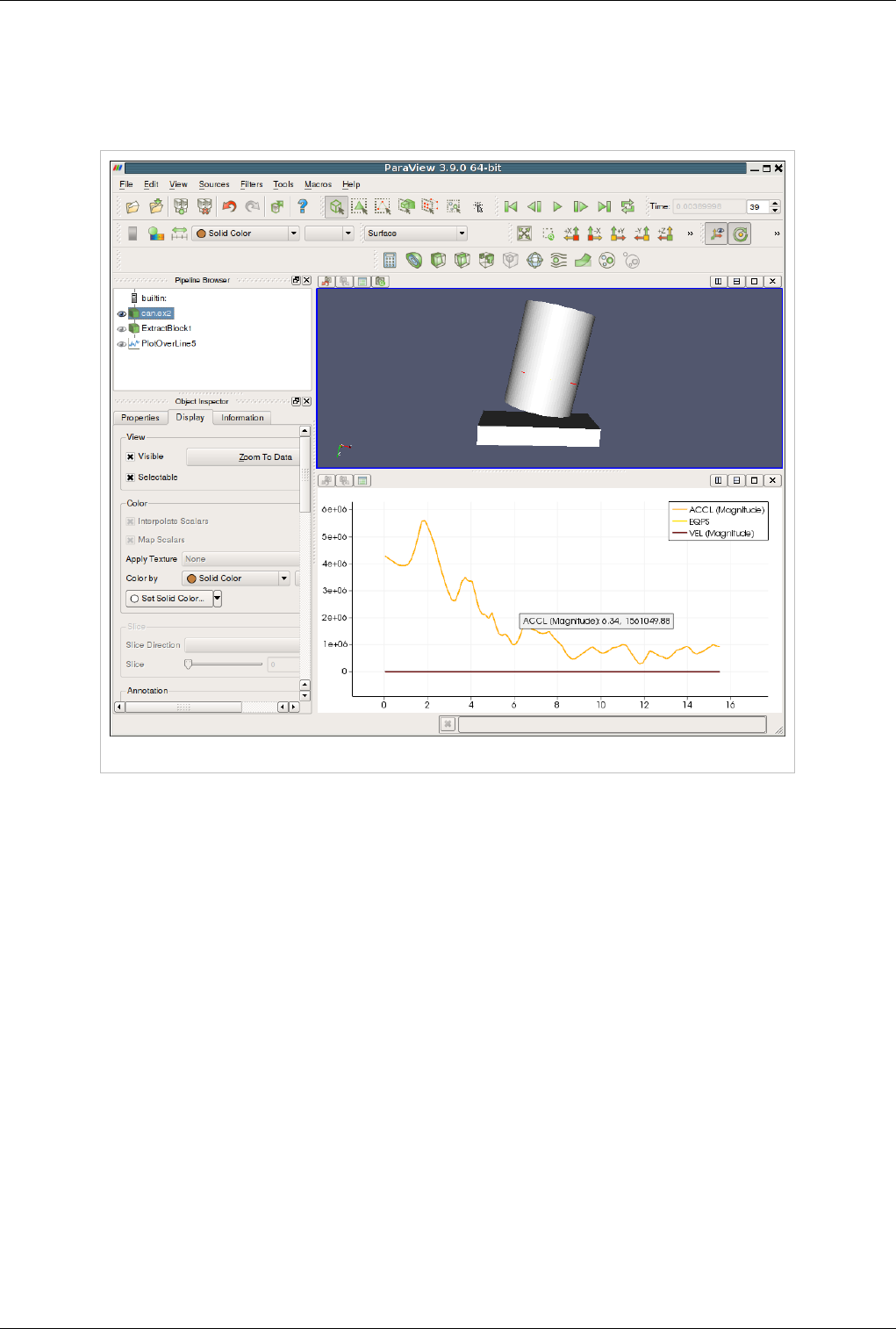

Some filters, such as Plot Over Line may automatically split the view frame and show the data in a particular type of

view suitable for the data generated by the filter.

Active View

Once you have multiple views, the active view is indicated by a colored border around the view frame. Several

menus as well as toolbar buttons affect the active view alone. Additionally, they may become enabled/disabled based

on whether that corresponding action is supported by the active view.

The Display tab affects the active view. Similarly, the eye icon in the Pipeline Browser, next to the pipeline objects,

indicates the visibility state for that object in the active view.

When a new filter, source or reader is created, if possible it will be displayed by default in the active view, otherwise,

if will create a new view.

Views, Representations and Color Mapping 38

Types of Views

This section covers the different types of views available in ParaView. For each view, we will talk about the controls

available to change the view parameters using View Settings as well as the parameters associated with the Display

Tab for showing data in that view.

3D View

3D view is used to show the surface or volume rendering for the data in a 3D world. This is the most commonly used

view type.

When running in client-server mode, 3D view can render data either by bringing the geometry to the client and then

rendering it there or by rendering it on the server (possibly in parallel) and then delivering the composited images to

the client. Refer to the Client-Server Visualization chapter for details.

This view can also be used to visualize 2D dataset by switching its interaction mode to the 2D mode. This can be

achieved by clicking on the button labelled "3D" in the view local toolbar. The label will automatically turn to 2D

and the 2D interaction will be used as well as parallel projection.

Interaction

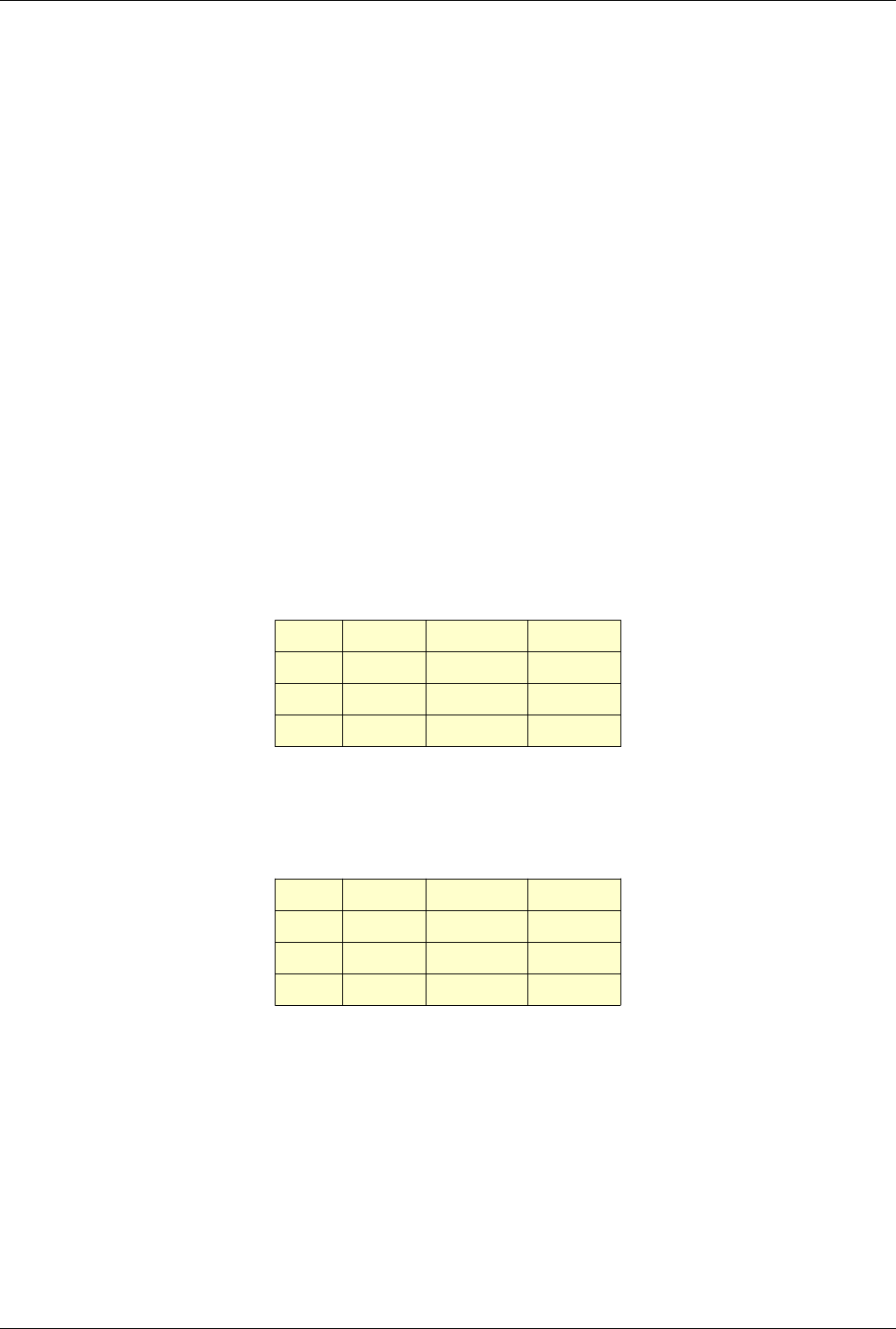

Interacting with the 3D view will typically update the camera. This makes it possible to explore the visualization

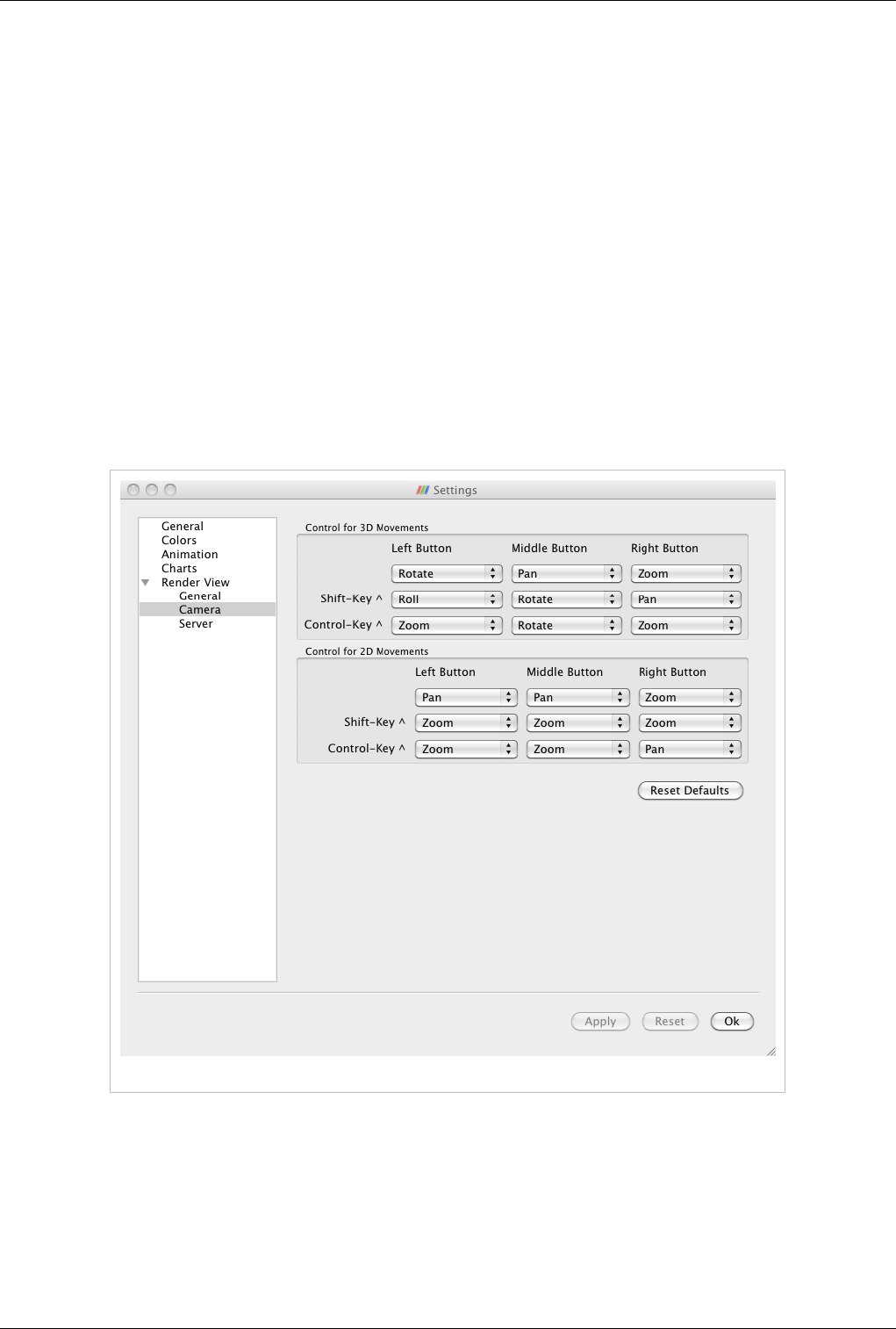

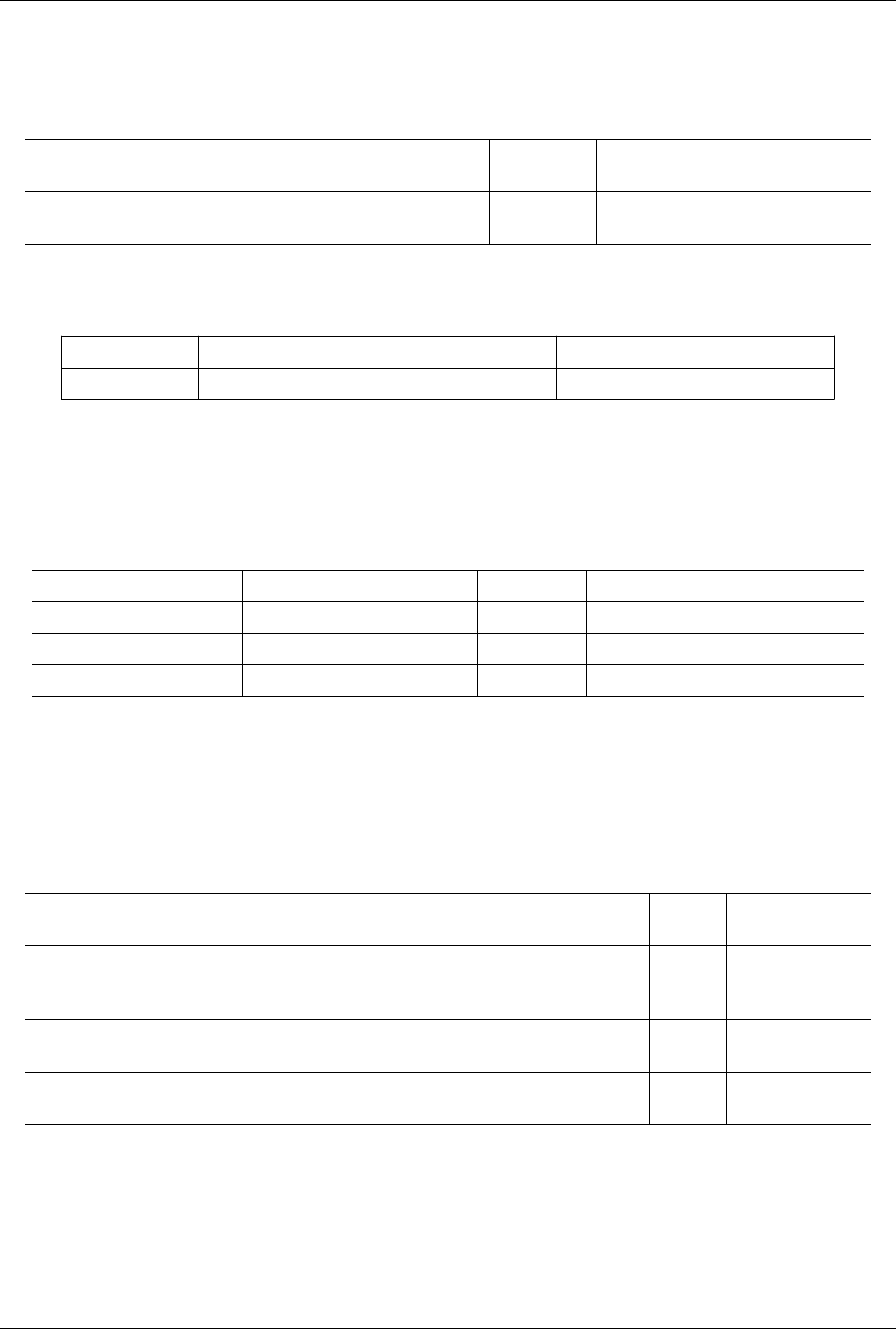

scene. The default buttons are shown in Table 4.1 and they can be changed using the Application Settings dialog.

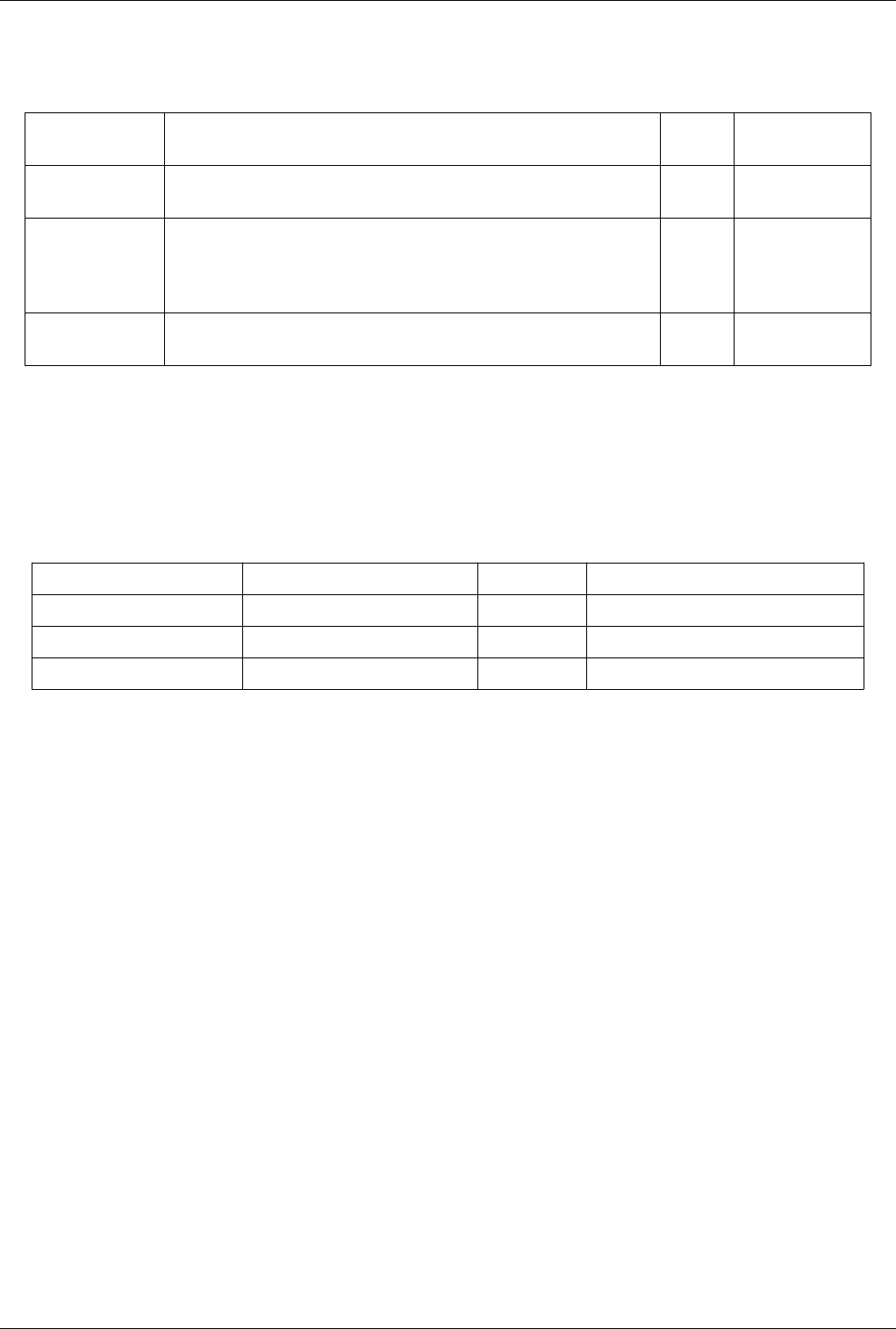

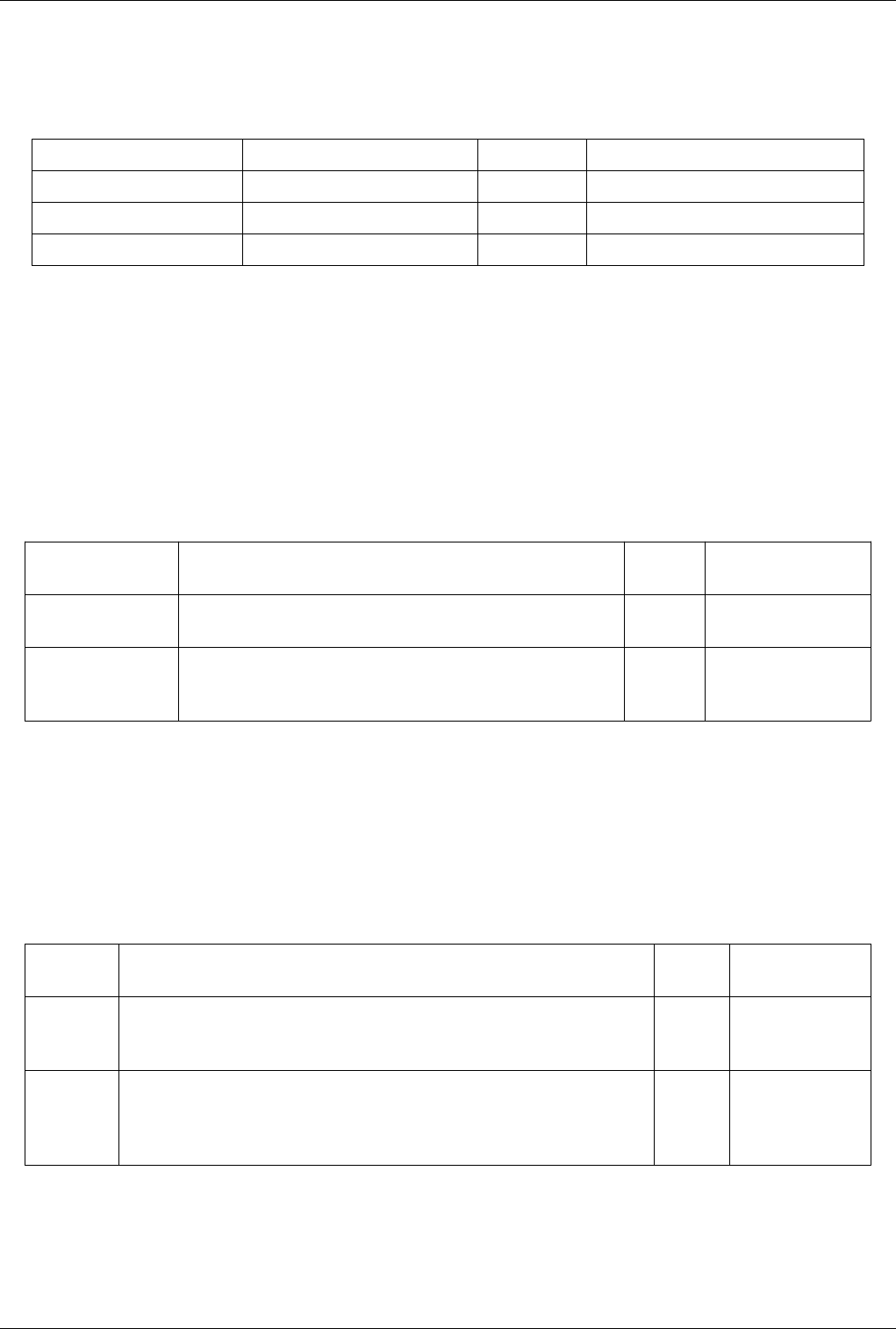

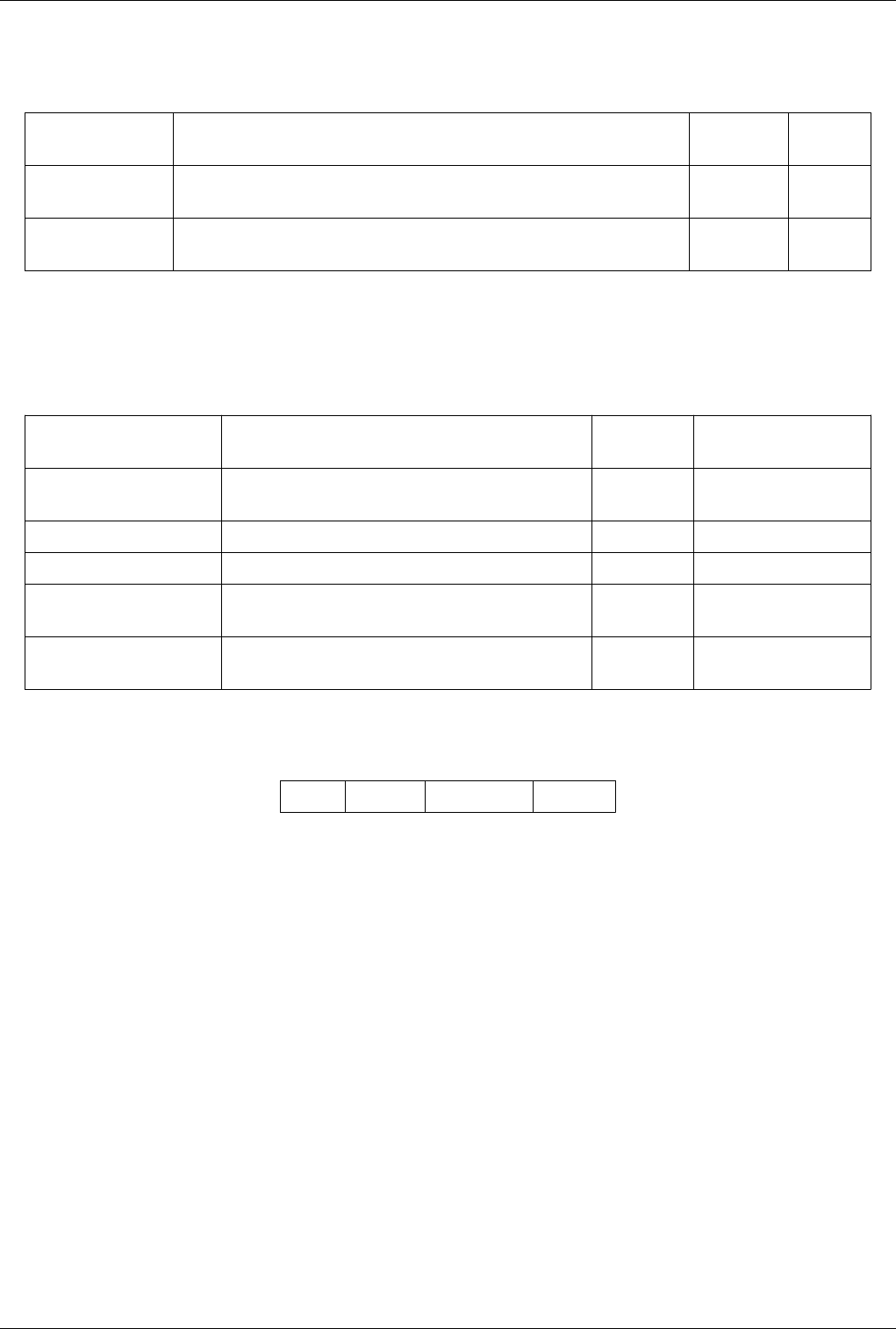

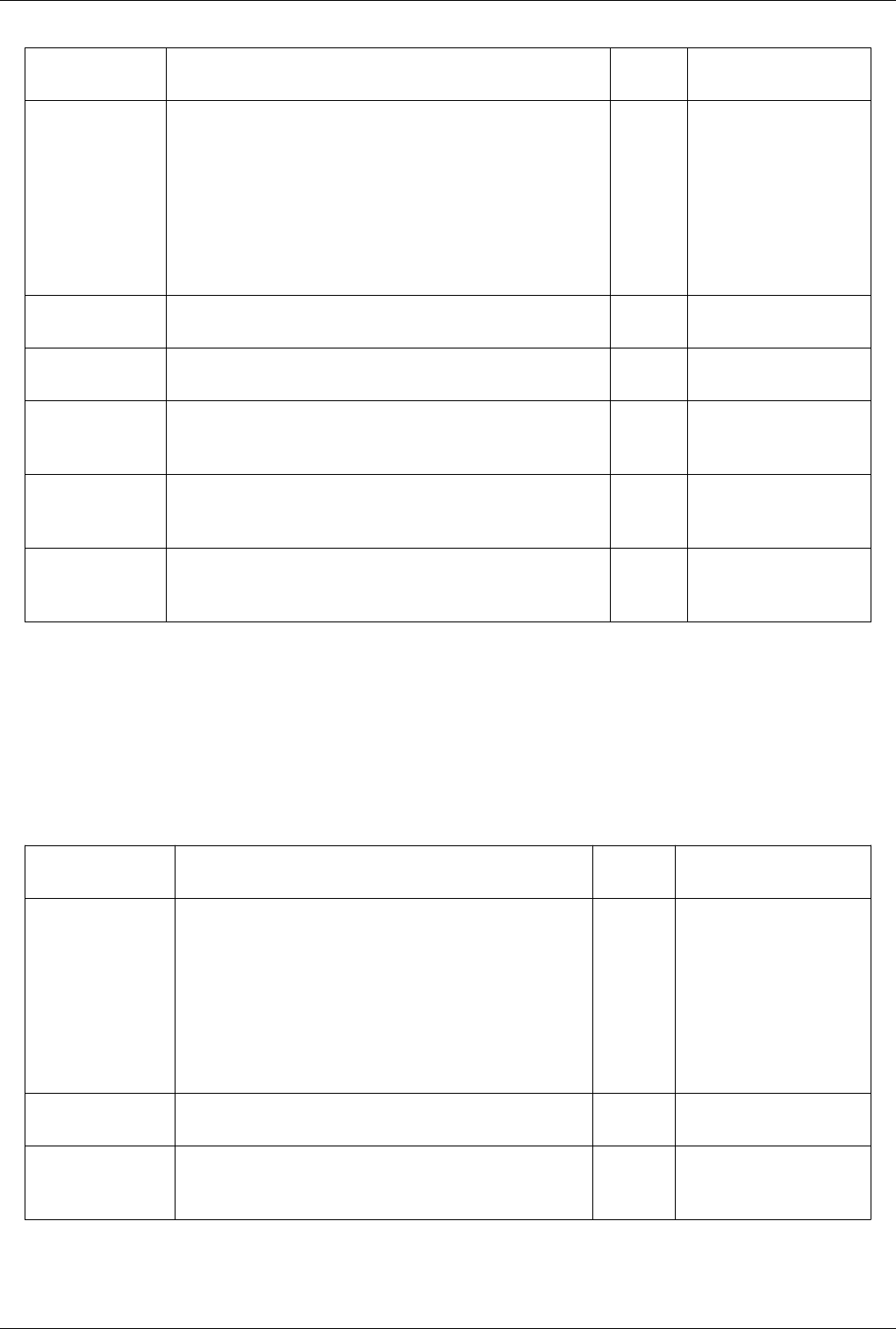

Table 4.1

Modifier Left Button Middle Button Right Button

Rotate Pan Zoom

Shift Roll Rotate Pan

Control Zoom Rotate Zoom

This view can dynamically switch to a 2D mode and follow the interaction shown in Table 4.2 and they can be

changed using the Application Settings dialog.

Table 4.2

Modifier Left Button Middle Button Right Button

Pan Pan Zoom

Shift Zoom Zoom Zoom

Control Zoom Zoom Pan

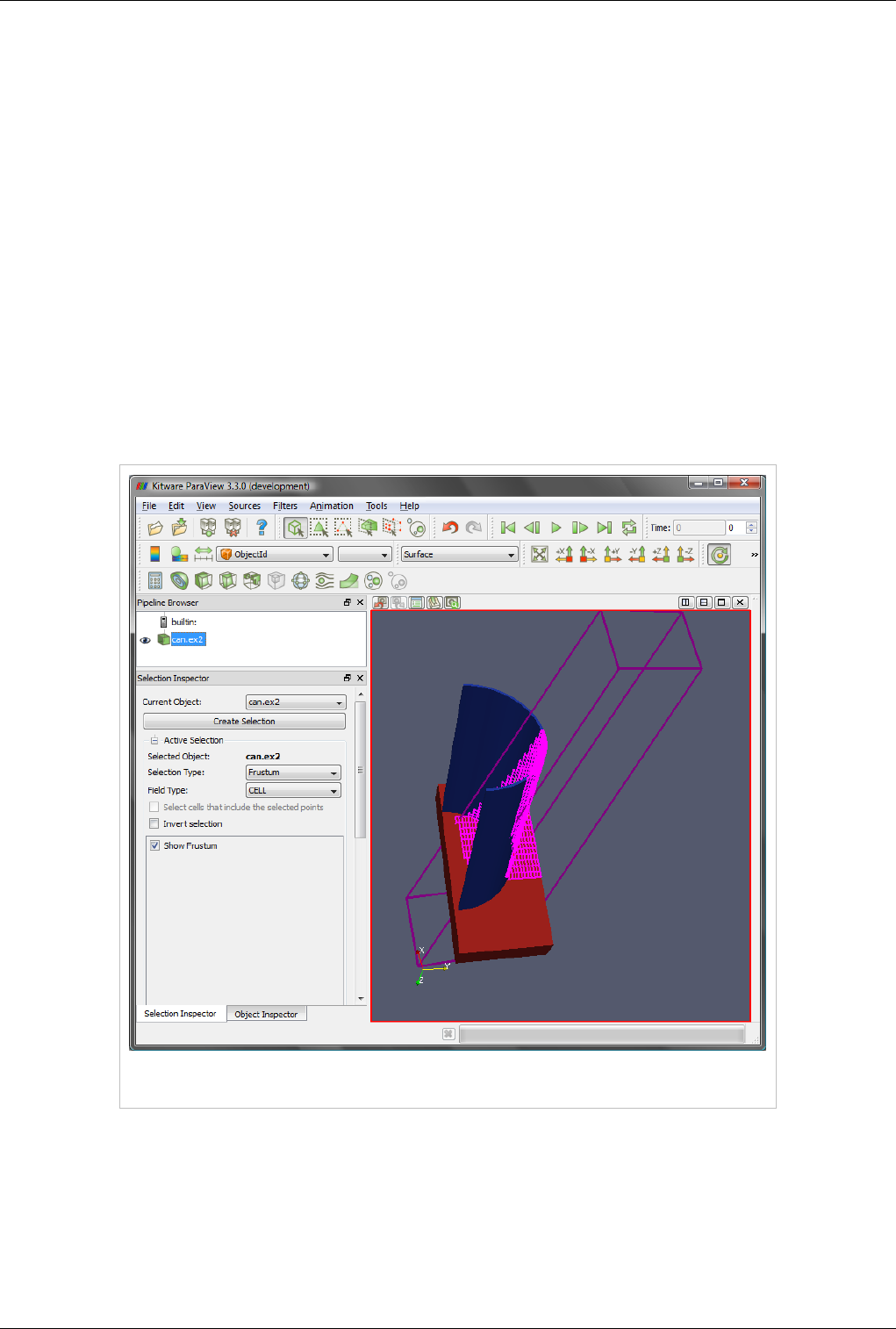

This view supports selection. You can select cells or points either on the surface or those within a frustum. Selecting

cells or points makes it possible to extract those for further inspection or to label them. Details about data querying

and selection can be found the Quantitative analysis chapter.

Views, Representations and Color Mapping 39

View Settings

The View Settings dialog is accessible through the Edit | View Settings menu or the tool button in the left corner of

the view can be used to change the view settings per view.

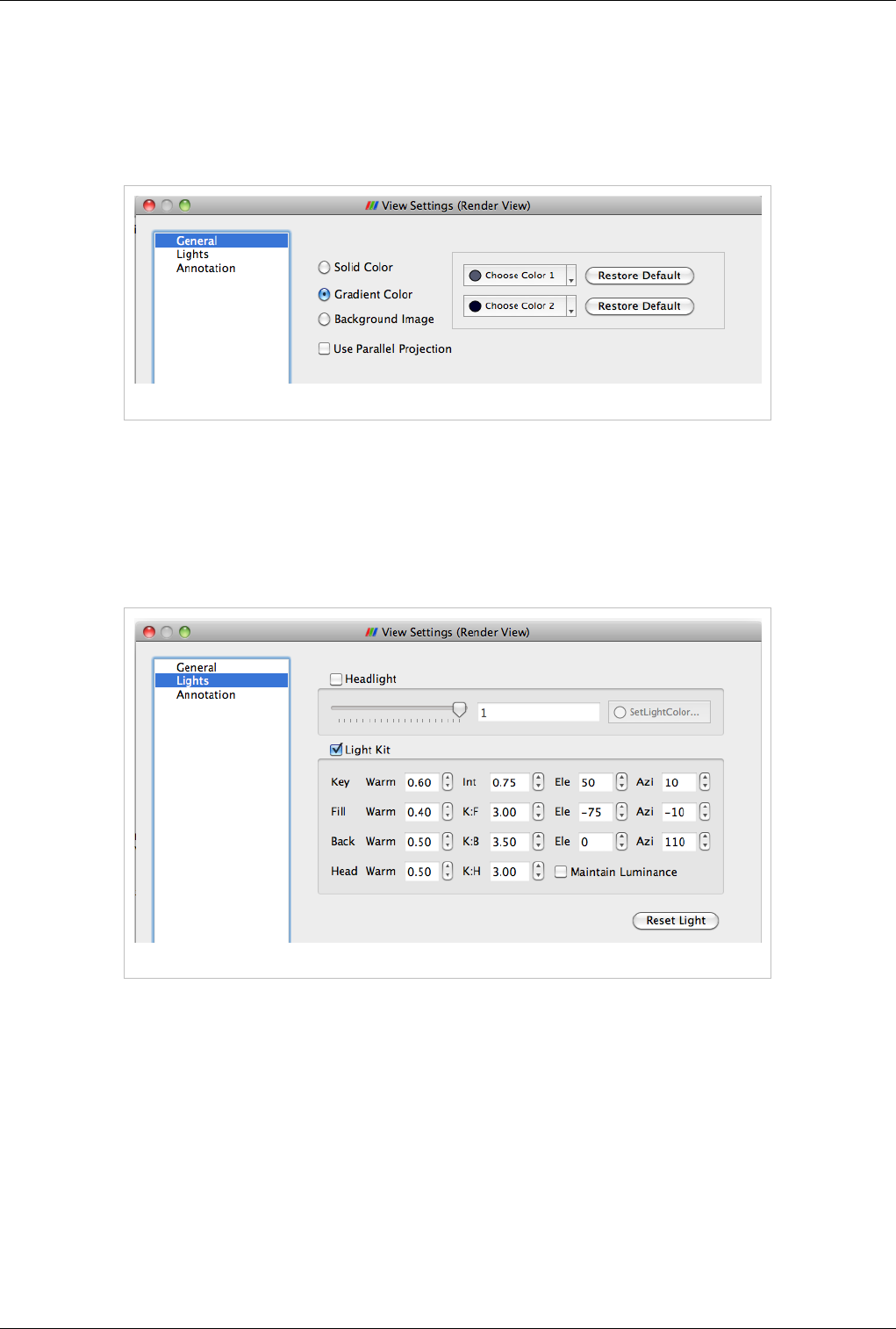

General

Figure 4.4 General tab in the View Settings menu

The General tab allows the user to choose the background color. You can use a solid color, gradient or a background

image.

By default the camera uses perspective projection. To switch to parallel projection, check the Use Parallel Projection

checkbox in this panel.

Lights

Figure 4.5 Lights tab in the View Settings menu

The 3D View requires lights to illumniate the geometry being rendered in the scene. You can control these lights

using this pane.

Views, Representations and Color Mapping 40

Annotation

Figure 4.6 Annotation tab in the View Settings menu

The annotation pane enables control of the visibility of the center axes and the orientation widget. Users can also

make the orientation widget interactive so that they can manually place the widget at location of their liking.

Display Properties

Users can control how the data from any source or filter is shown in this view using the Display tab. This section

covers the various options available to a user for controlling appearance of the rendering in the 3D view.

View

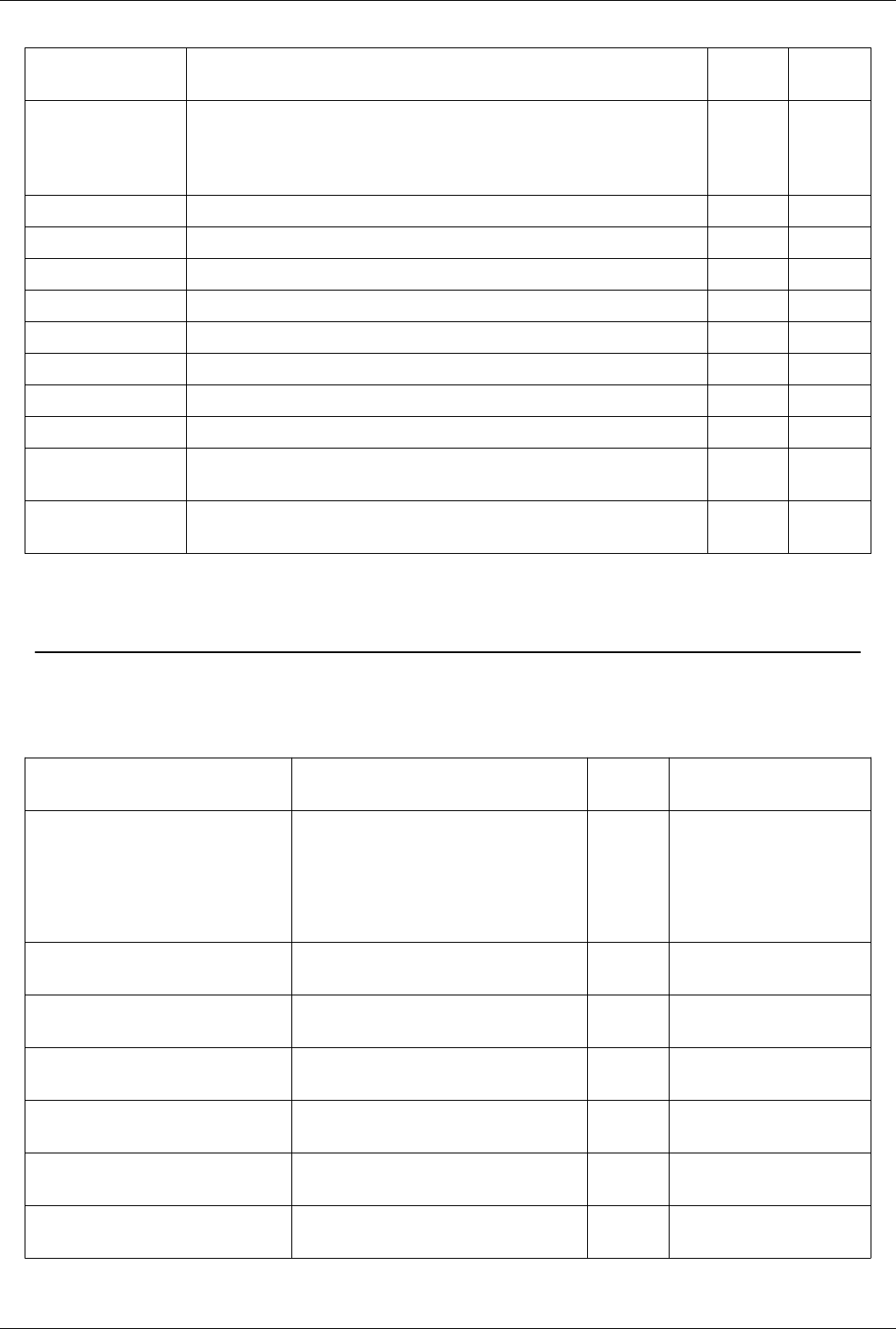

The View menu has three options for controlling how the data is viewed. These are described in Table 4.3.

Figure 4.6 View menu

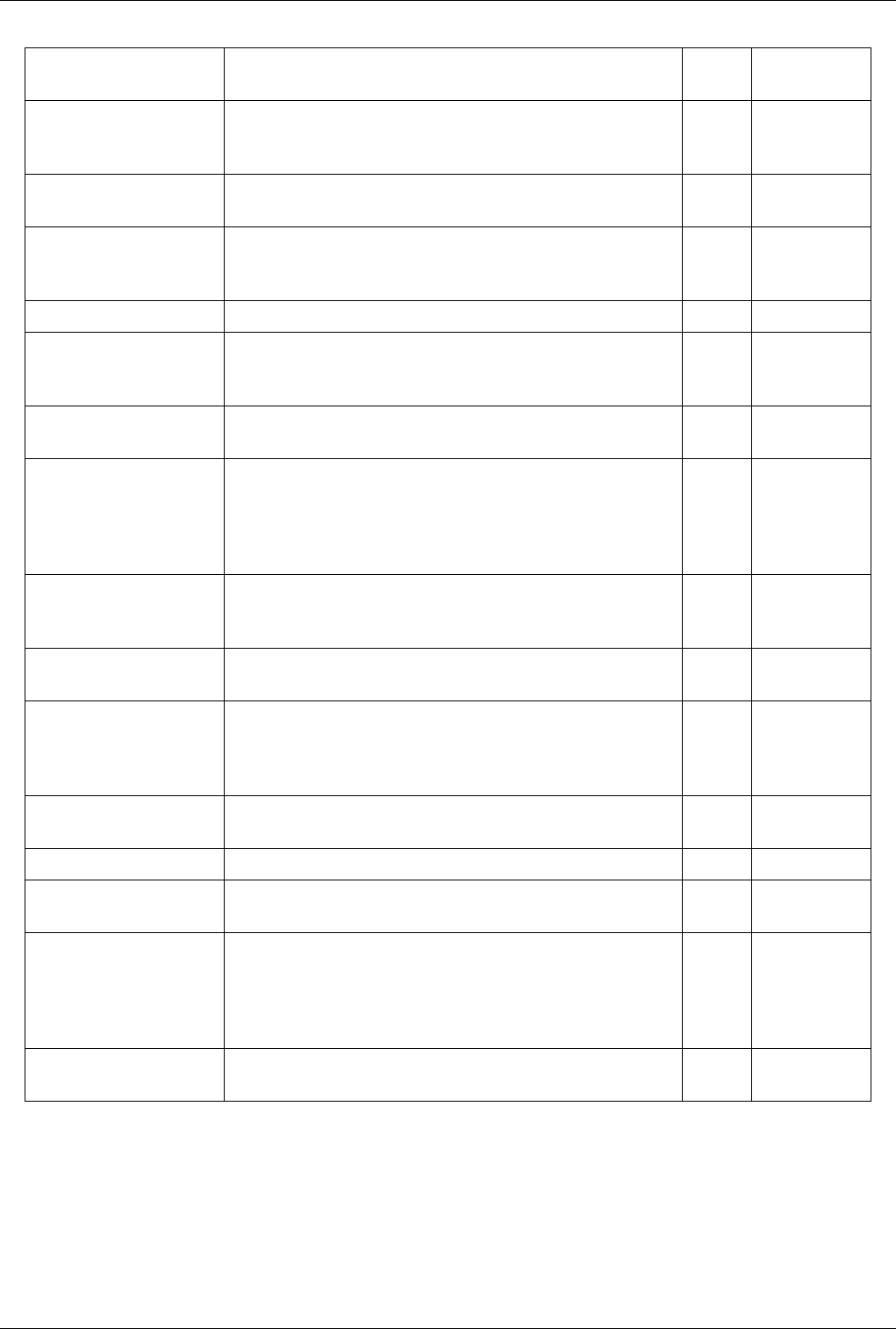

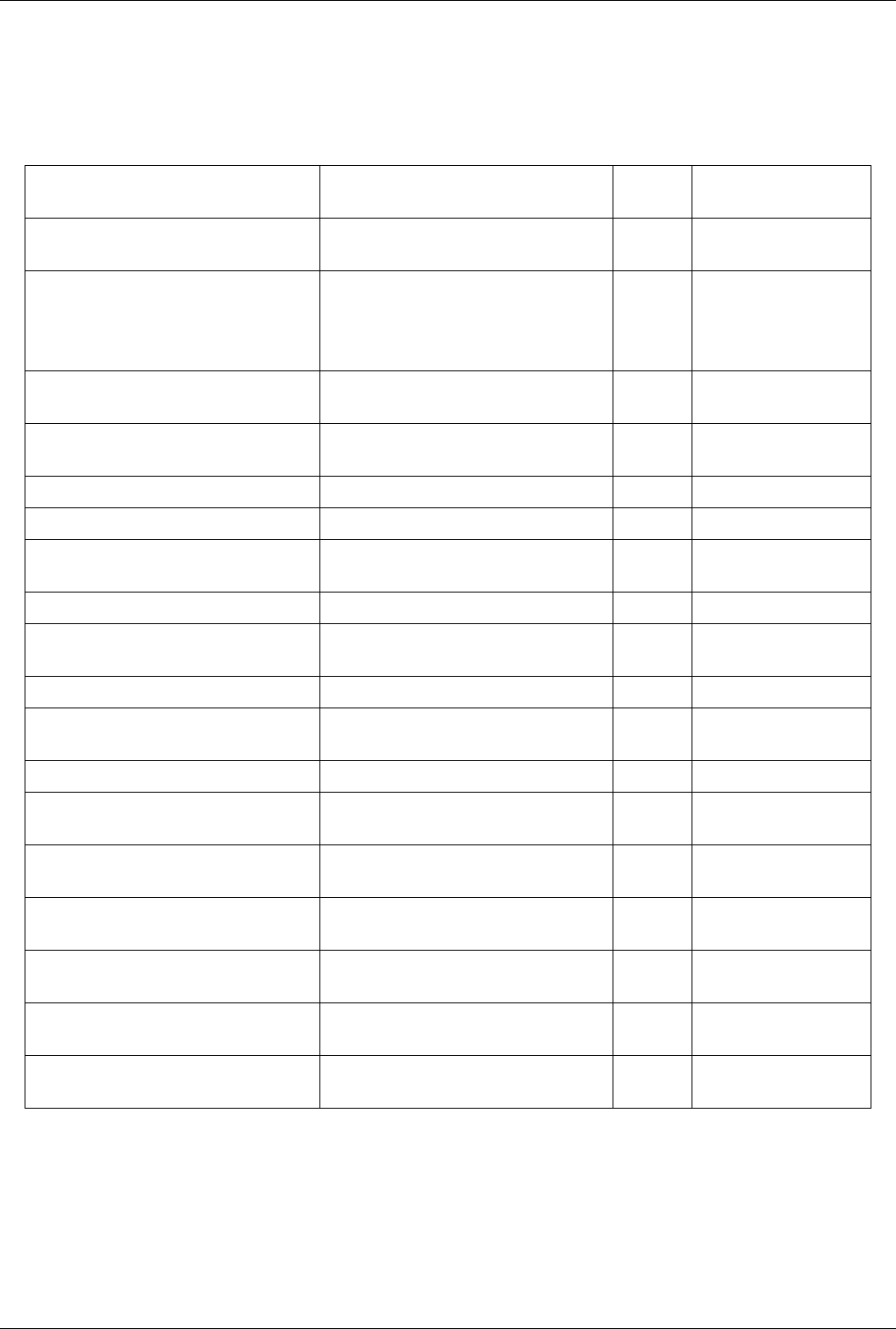

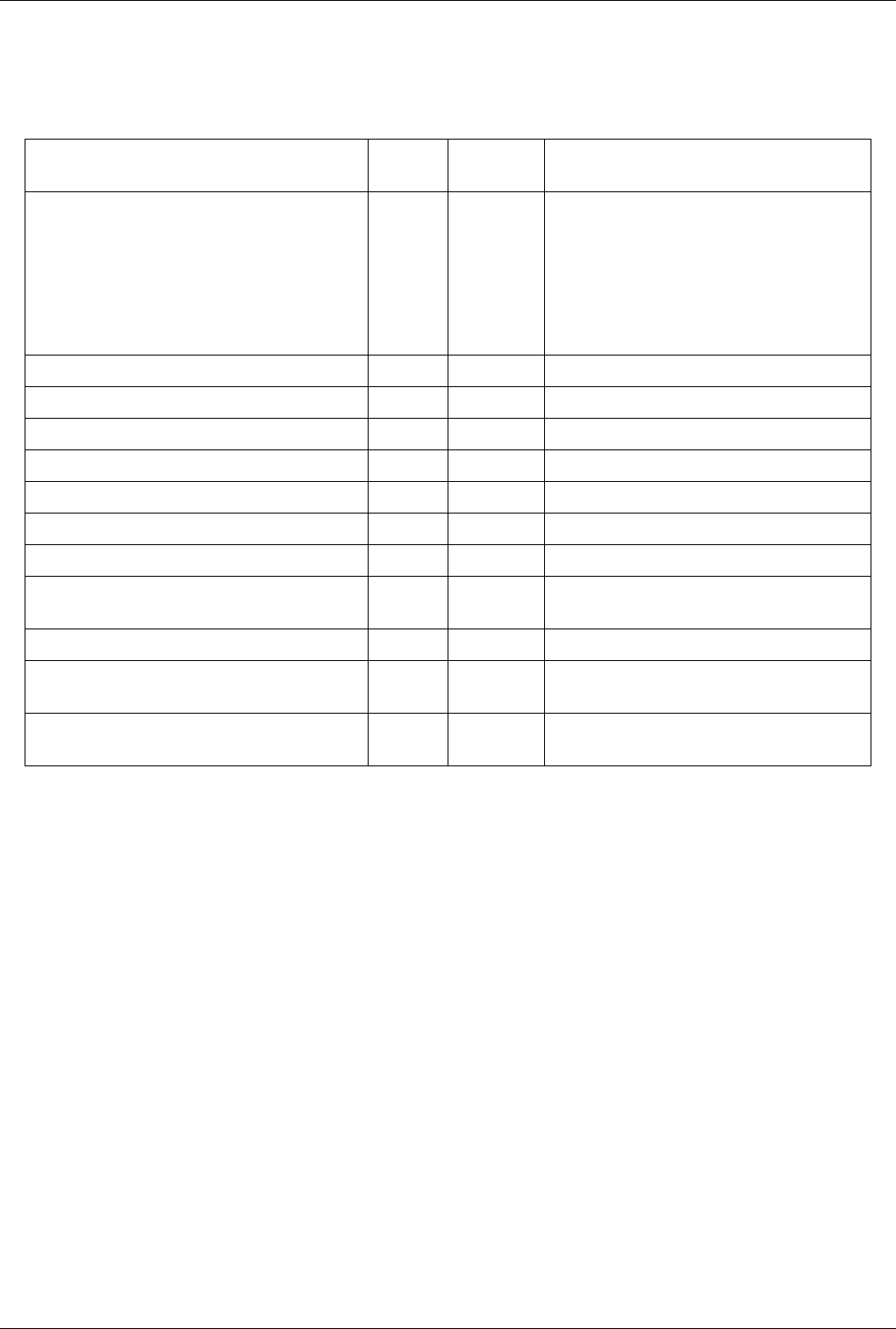

Table 4.3

Name Usage

Visible Checkbox used to toggle the visibility of the data in the view. If it disabled, it implies that the data cannot be shown in this view.

Selectable Checkbox used to toggle whether the data gets selected when using the selection mechanism for selecting and sub-setting data.

Zoom to Data Click this button to zoom the camera so that the dataset is completely fits within the viewport.

Views, Representations and Color Mapping 41

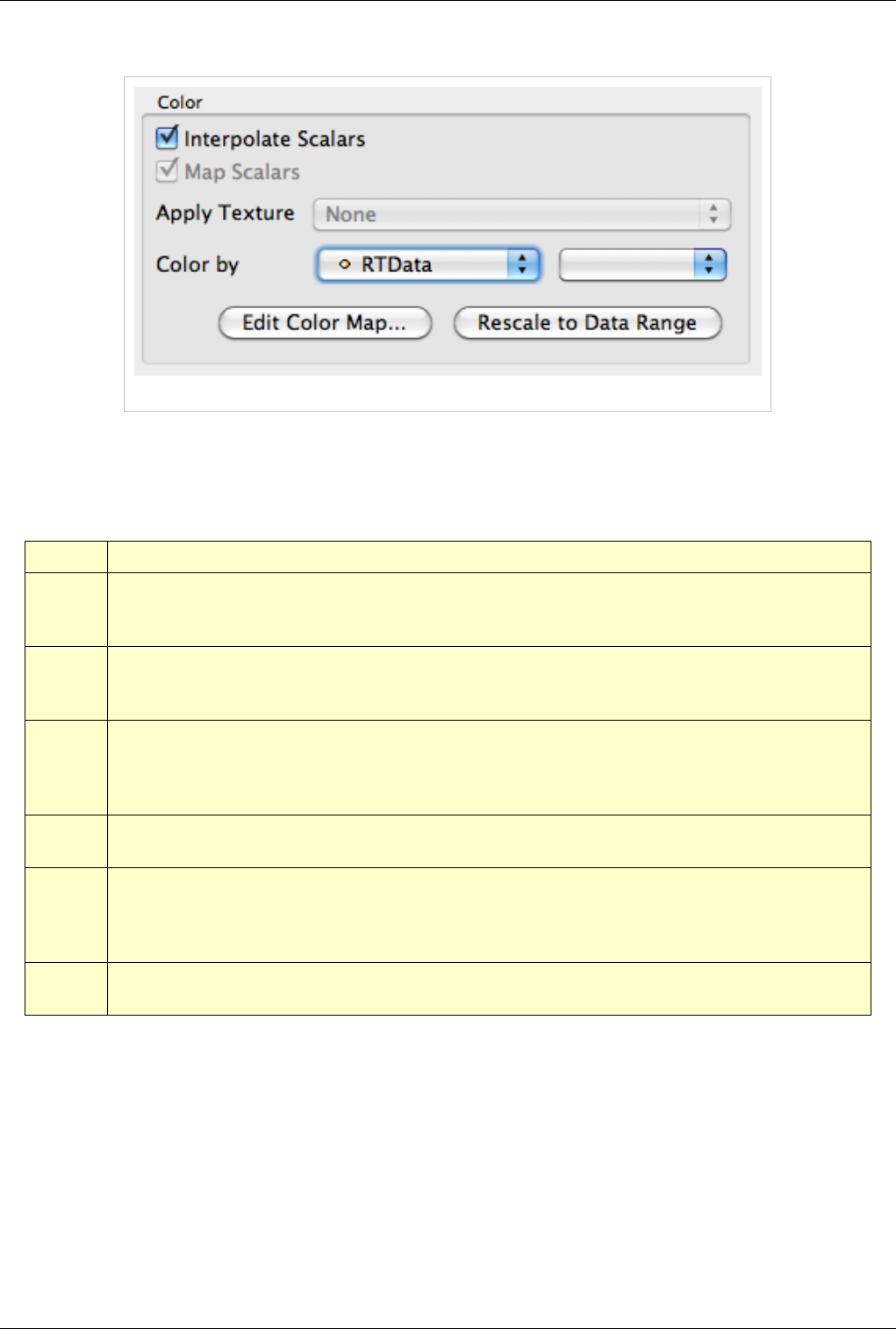

Color

Figure 4.8 Color options

The color group allows users to pick the scalar to color with or set a fixed solid color for the rendering. The options

in Figure 4.8 are described in detail in Table 4.4

Table 4.4

Name Usage

Interpolate

Scalars

If selected, the scalars will be interpolated within polygons and the scalar mapping happens on a per pixel basis. If not selected, then

color mapping happens at points and colors are interpolated which is typically less accurate. This only affects when coloring with

point arrays and has no effect otherwise. This is disabled when coloring using a solid color.

Map Scalars If the data array can be directly interpreted as colors, then you can uncheck this to not use any lookup table. Otherwise, when

selected, a lookup table will be used to map scalars to colors. This is disabled when the array is not of a type that can be interpreted as

colors (i.e. vtkUnsignedCharArray).

Apply

Texture

This feature makes it possible to apply a texture over the surface. This requires that the data has texture coordinates. You can use

filters like Texture Map to Sphere, Texture Map to Cylinder or Texture Map to Plane to generate texture coordinates when they are

not present in the data. To load a texture, select Load from the combo box which will pop up a dialog allowing you to choose an

image. Otherwise, select from already loaded textures listed in the combo box.

Color By This feature enables coloring of the surface/volume. Either choose the array to color with or set the solid color to use. When volume

rendering, solid coloring is not possible, you must choose the data array to volume render with.

Set solid

color

Used to set the solid color. This is available only when Color By is set to use Solid Color. ParaView defines a notion of a color

palette consisting of different color categories. To choose a color from one of these predefined categories, click the arrow next to this

button. It will open up a drop down with options to choose from. If you use a color from the palette, it is possible to globally change

the color by changing the color palette e.g. for printing or for display on screen etc.

Edit Color

Map...

You can edit the color map or lookup table by clicking the Edit Color Map button. It is only shown when an array is chosen in the

Color By combo-box.

Views, Representations and Color Mapping 42

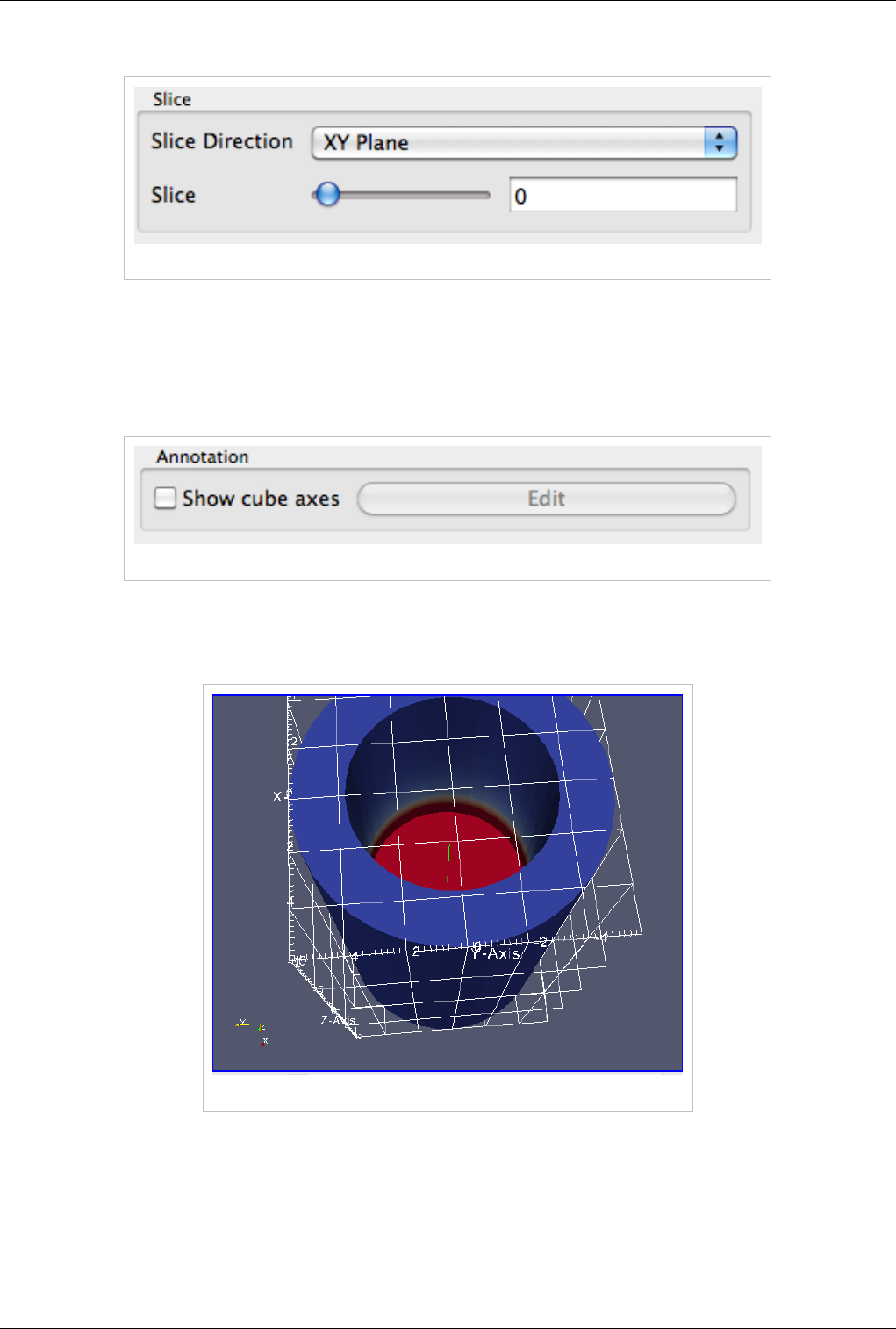

Slice

Figure 4.9 Slice options

The slice controls are available only for image datasets (uniform rectilinear grids) when the representation type is

Slice. The representation type is controlled using the Style group on the Display tab. These allow the user to pick the

slice direction as well as the slice offset.

Annotation

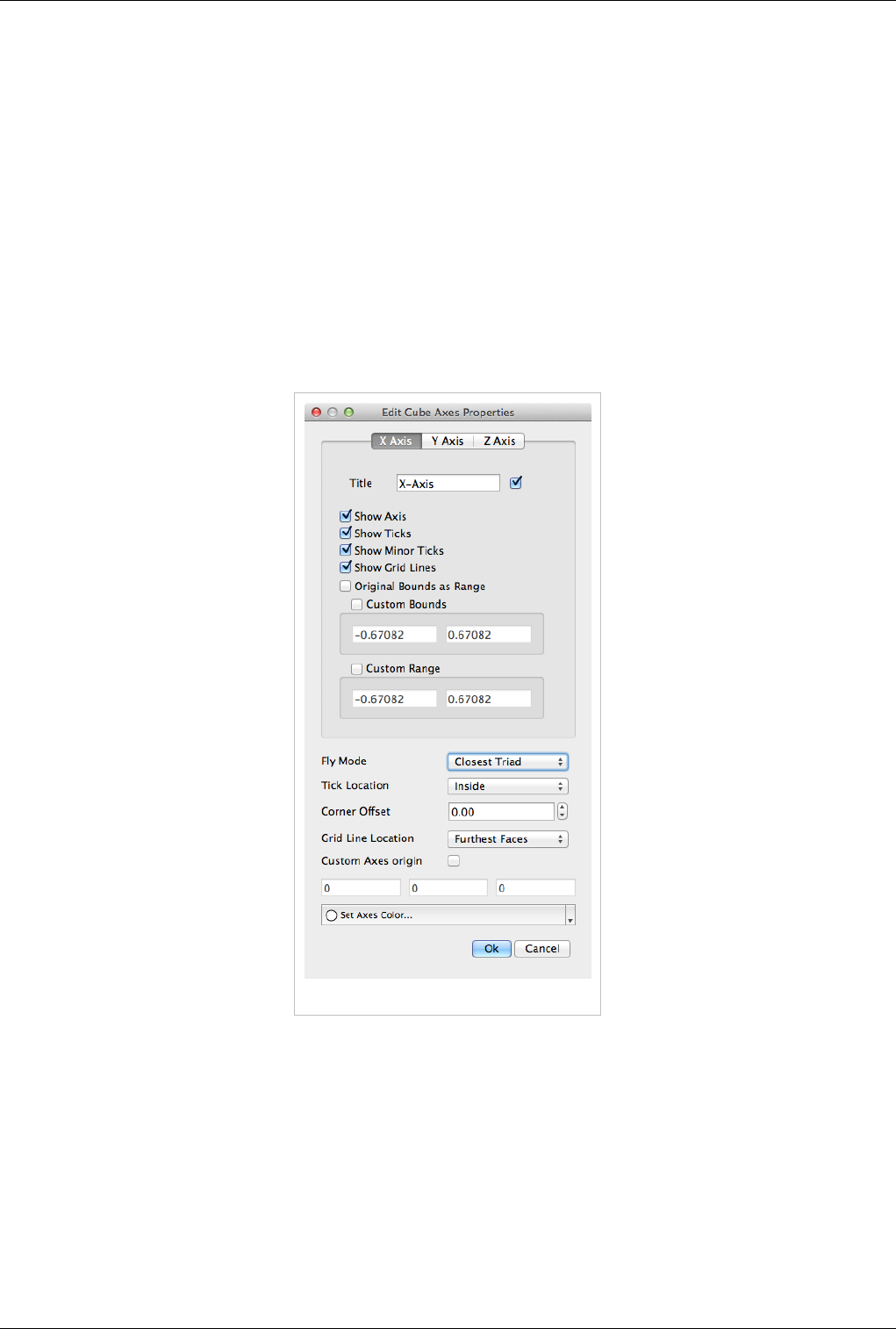

Figure 4.10 Annotation options

Cube axes is an annotation box that can be used to show a scale around the dataset. Use the Show cube axes

checkbox to toggle its visibility. You can further control the apperance of the cube axes by clicking Edit once the

cube-axes is visible.

Figure 4.11 Show cube axes example

Views, Representations and Color Mapping 43

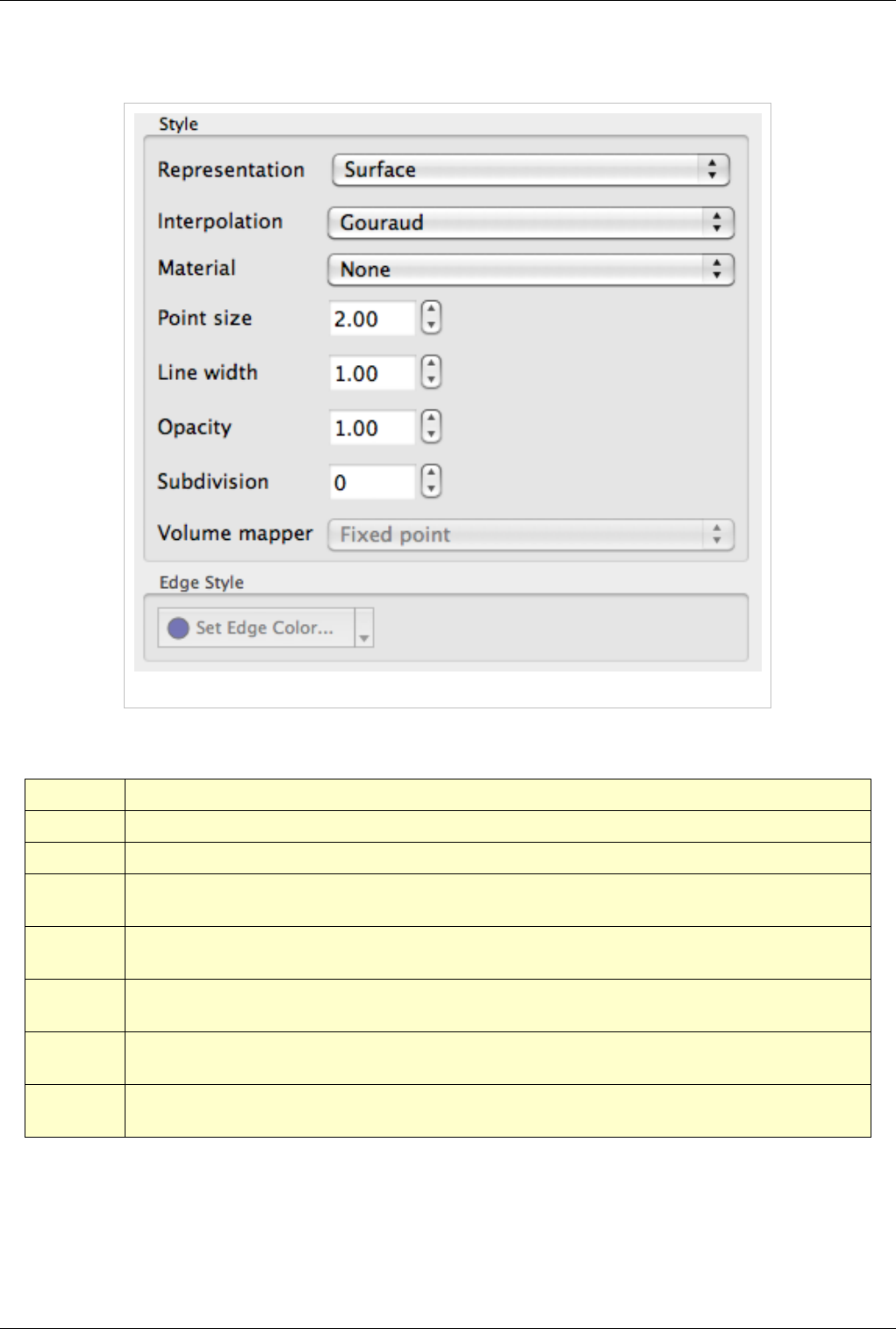

Style

Figure 4.12 shows the Style dialog box. The options in this dialog box are described in detail in Table 4.5 below.

Figure 4.12 Sytle dialog box

'Table 4.5

Name Usage

Representation Use this to change how the data is represented i.e. as a surface, volume, wireframe, points, or surface with edges.

Interpolation Choose the method used to shade the geometry and interpolate point attributes.

Point Size If your dataset contains points or vertices, this adjusts the diameter of the rendered points. It also affects the point size when

Representation is Points.

Line width If your dataset contains lines or edges, this scale adjusts the width of the rendered lines. It also affects the rendered line width

when Representation is Wireframe or Surface With Edges.

Opacity Set the opacity of the dataset's geometry. ParaView uses hardware-assisted depth peeling, whenever possible, to remove artifacts

due to incorrect sorting order of rendered primitives.

Volume

Mapper

When Representation is Volume, this combo box allows the user to choose a specific volume rendering technique. The techniques

available change based on the type of the dataset.

Set Edge Color This is available when Representation is Surface with Edges. It allows the user to pick the color to use for the edges rendered over

the surface.

Views, Representations and Color Mapping 44

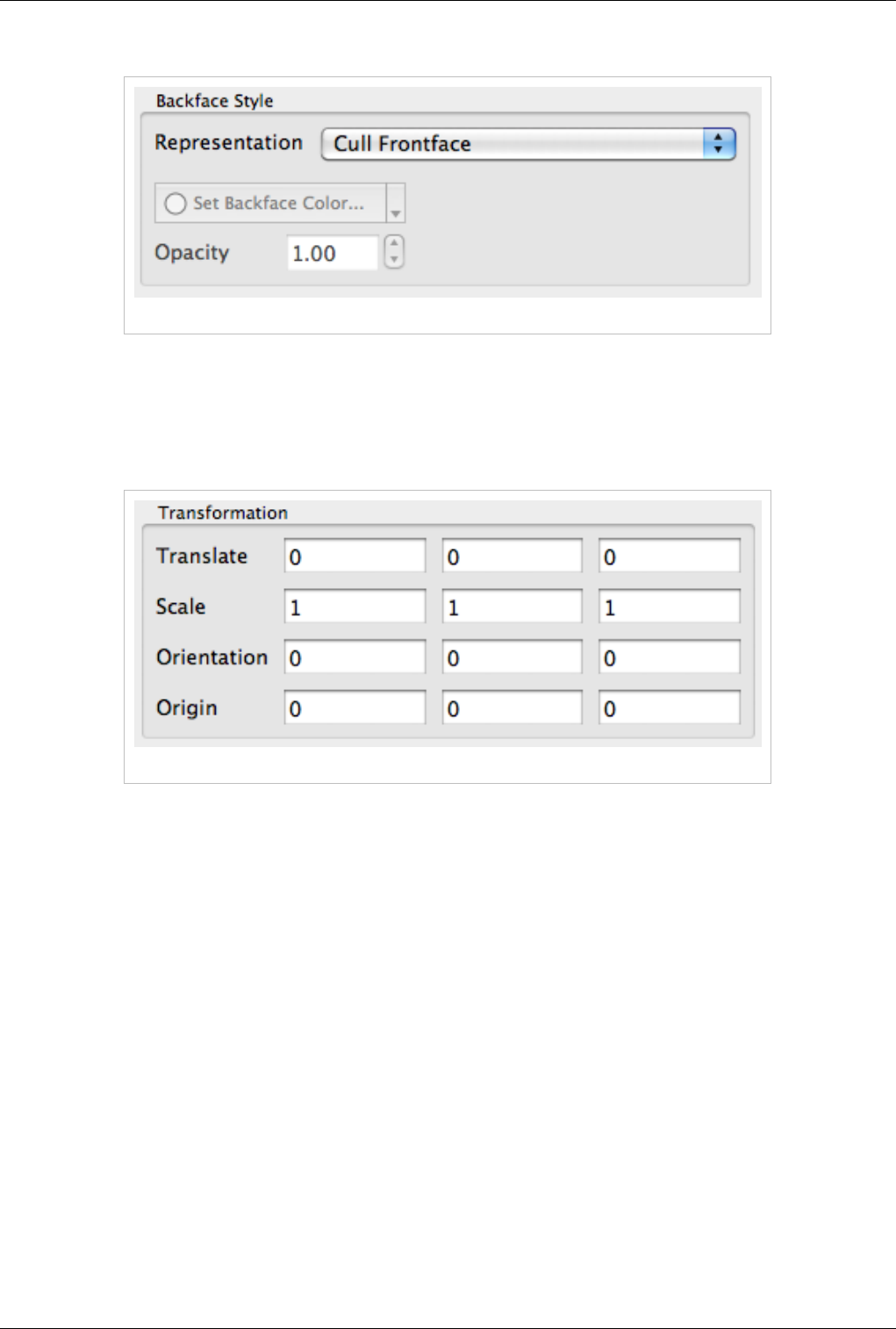

Backface Style

Figure 4.13 Backface Style dialog box

The Backface Style dialog box allows the user to define backface properties. In computer graphics, backface refers

to the face of a geometric primitive with the normal point away from the camera. Users can choose to hide the

backface or front face, or specify different characteristics for the two faces using these settings.

Transformation

Figure 4.14 Transformation dialog box

These settings allow the user to transform the rendered geometry, without actually transforming the data. Note that

since this transformation happens during rendering, any filters that you apply to this data source will still be working

on the original, untransformed data. Use the Transform filter if you want to transform the data instead.

2D View

This view does not exist anymore as it has been replaced by a more flexible 3D view that can switch from a 3D to

2D mode dynamically. For more information, please see the 3D view section.

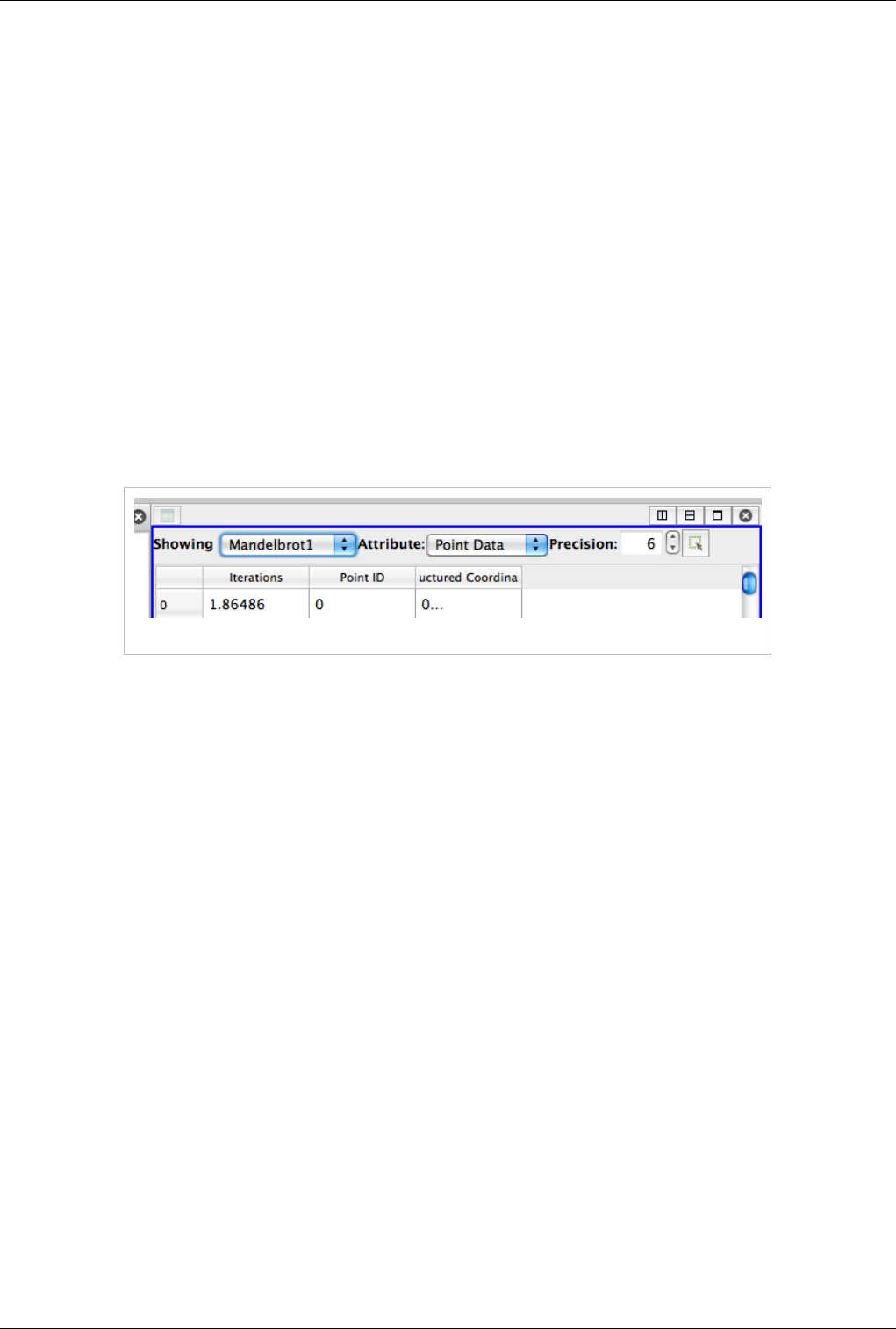

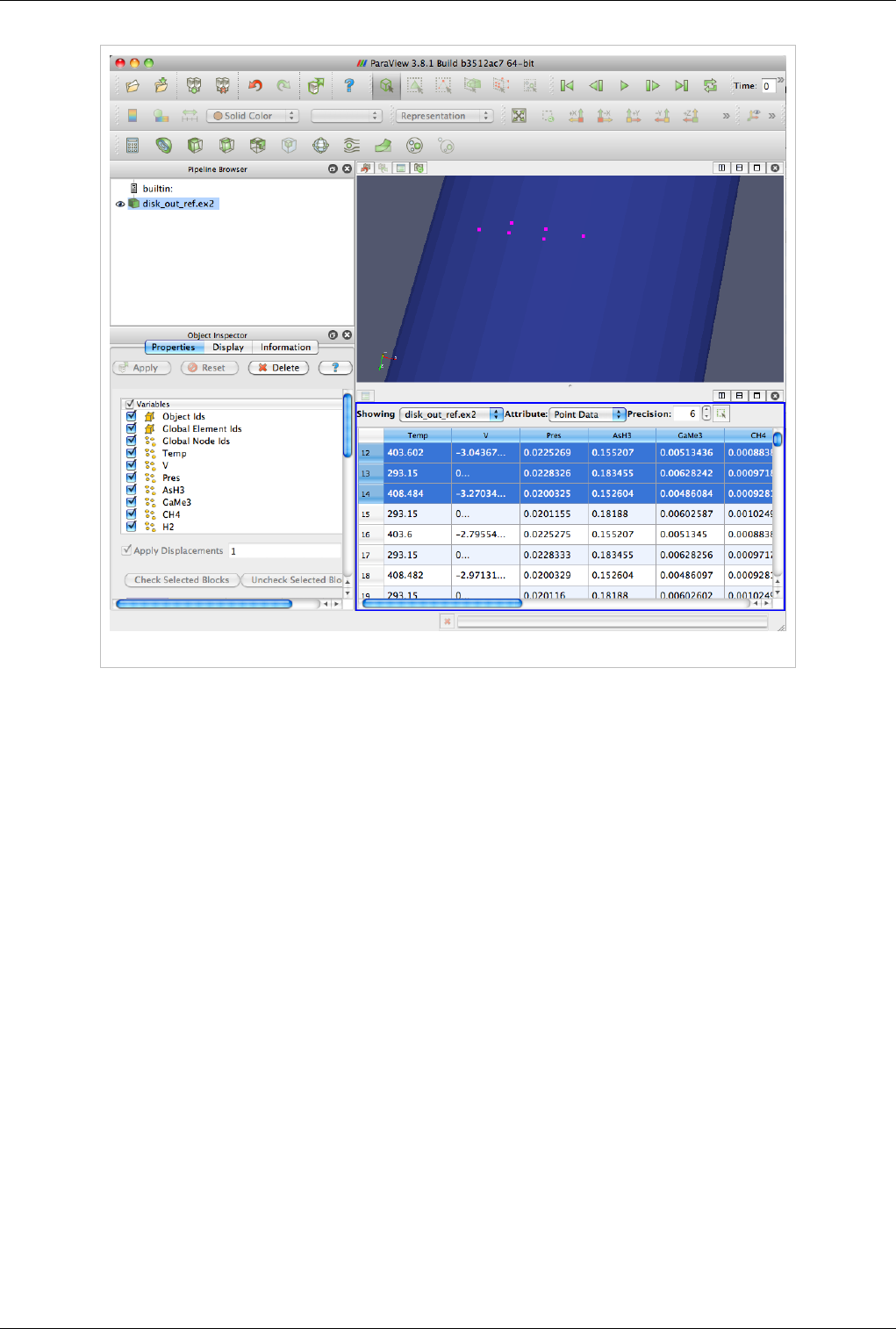

Spreadsheet View

Spreadsheet View is used to inspect the raw data in a spreadsheet. When running in client-server mode, to avoid

delivering the entire dataset to the client for displaying in the spreadsheet (since the data can be very large), this view

streams only visible chunks of the data to the client. As the user scrolls around the spreadsheet, new data chunks are

fetched.

Unlike some other views, this view can only show one dataset at a time. For composite datasets, it shows only one

block at a time. You can select the block to show using the Display tab.

Views, Representations and Color Mapping 45

Interaction

In regards to usability, this view behaves like typical spreadsheets shown in applications like Microsoft Excel or

Apple Pages:

• You can scroll up and down to inspect new rows.

• You can sort any column by clicking on the header for the column. Repeated clicking on the column header

toggles the sorting order. When running in parallel, ParaView uses sophisticated parallel sorting algorithms to

avoid memory and communication overheads to sort large, distributed datasets.

• You can double-click on a column header to toggle a mode in which only that column is visible. This reduces

clutter when you are interested in a single attribute array.

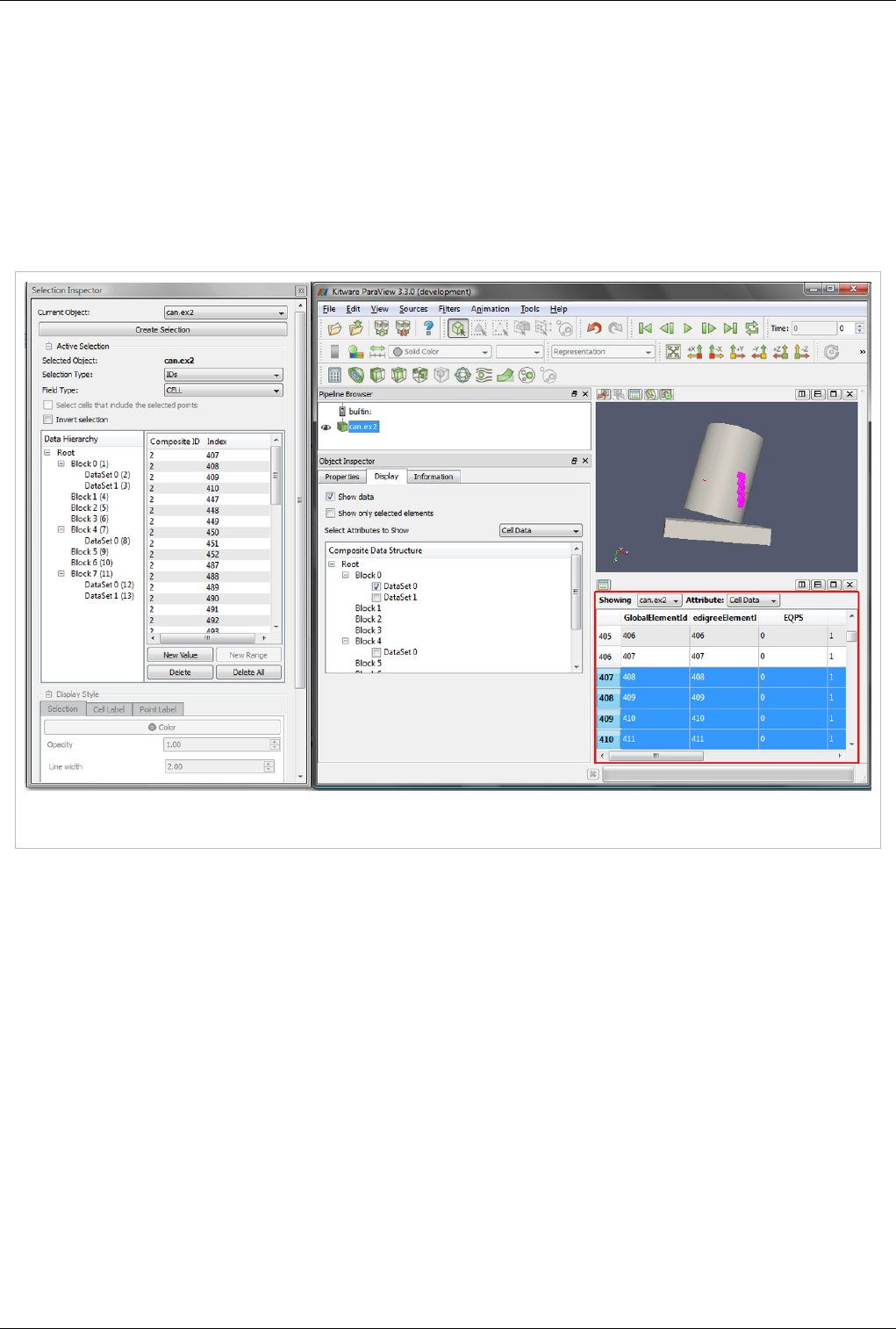

• You can click on rows to select the corresponding elements i.e. cells or points. This is not available when in

"Show selected only mode." Also, when you create a selection in other views e.g. the 3D view, the rows

corresponding to the selected elements will be highlighted.

Header

Unlike other views, Spreadsheet View has a header. This header provides quick access to some of the commonly

used functionality in this view.

Figure 4.17 Spreadsheet View Header

Since this view can only show one dataset at a time, you can quickly choose the dataset to show using the Showing

combo box. You can choose the attribute type i.e. point attributes, cell attributes, to display using the Attribute

combo box. The Precision option controls the number of digits to show after decimal point for floating point

numbers. Lastly, the last button allows the user to enter the view in a mode where it only shows the selected rows.

This is useful when you create a selection using another view such as the 3D view and want to inspect the details for

the selected cells or points.

Views, Representations and Color Mapping 46

View Settings

Currently, no user settable settings are available for this view.

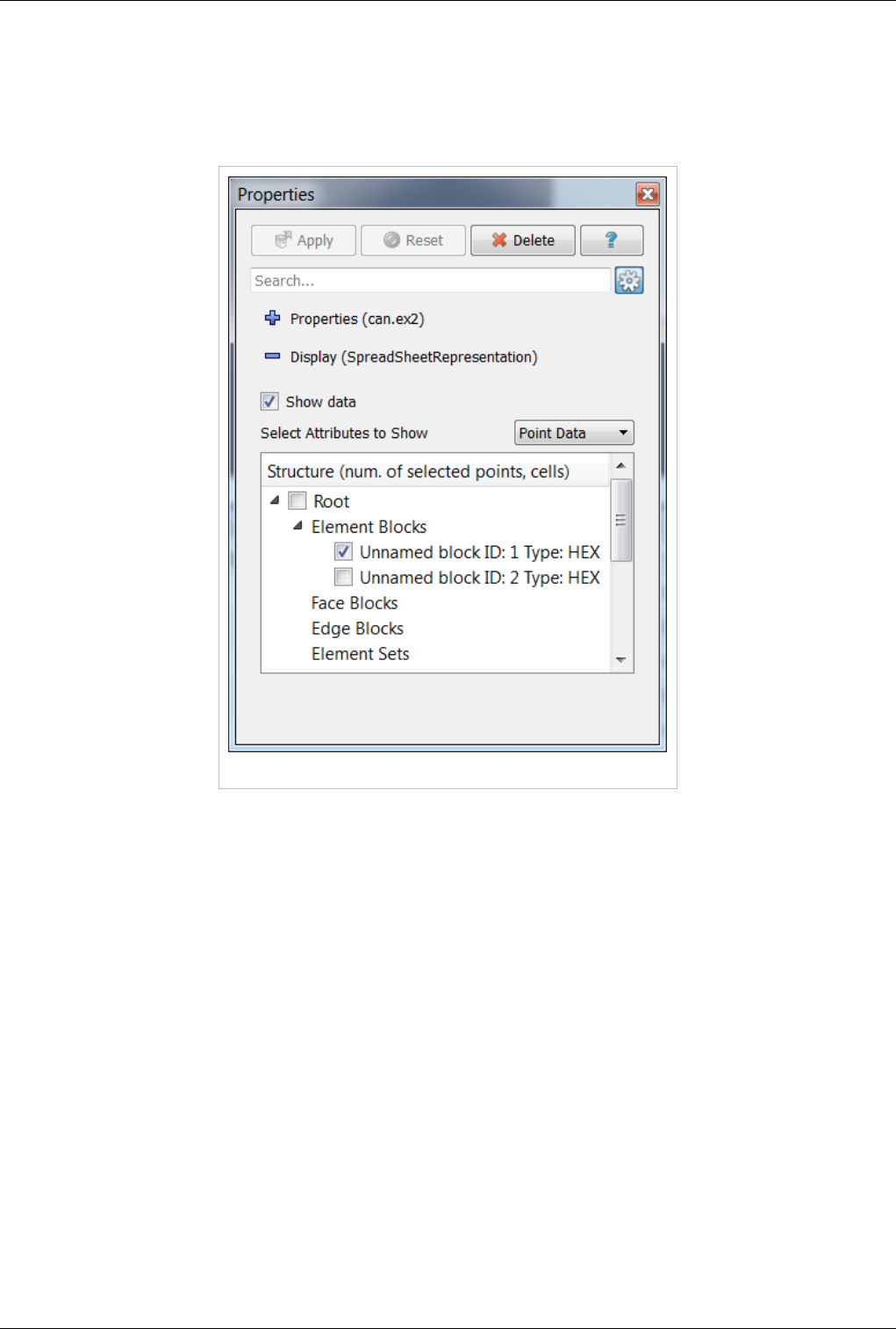

Display Properties

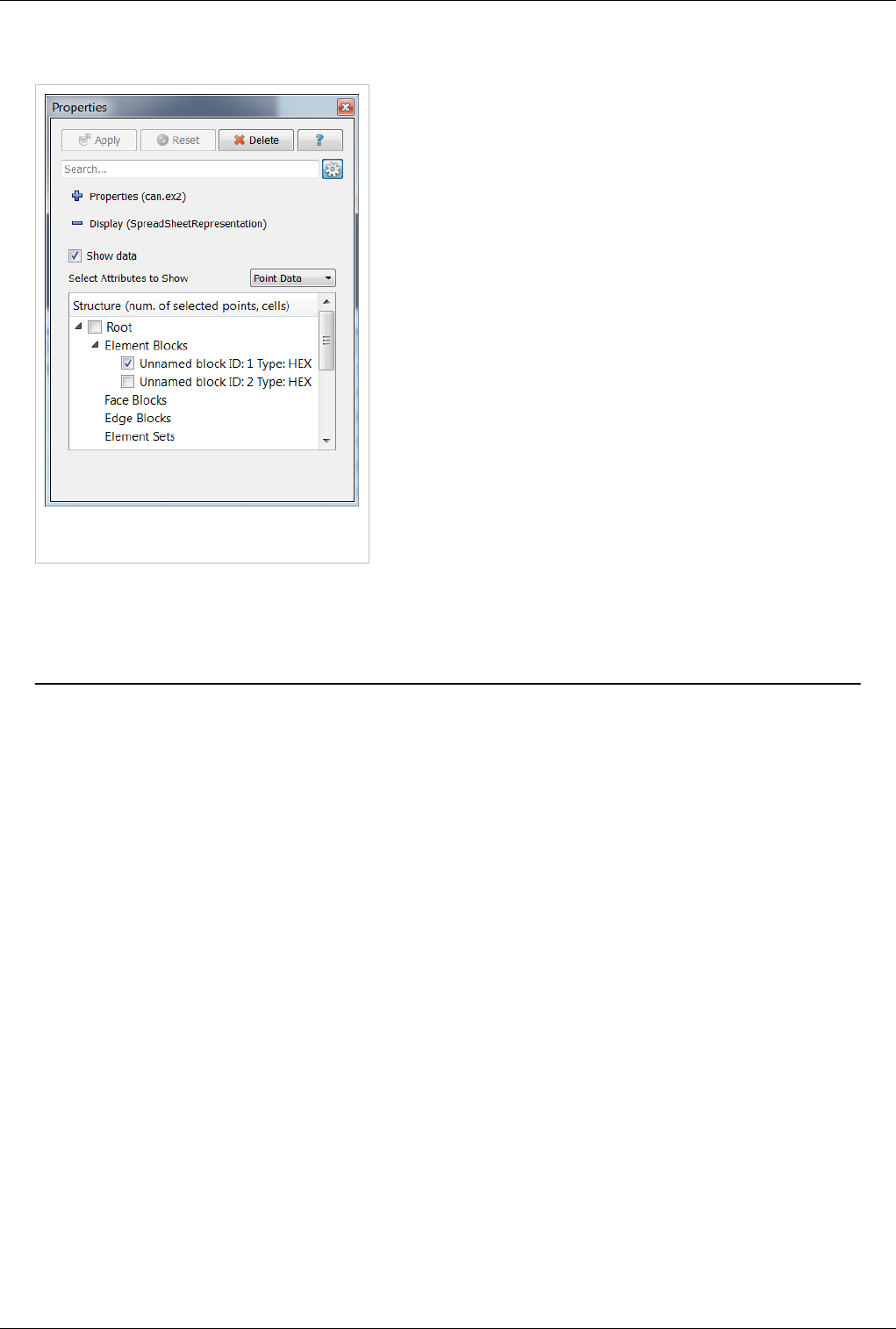

Figure 4.18 Display tab in the Object Inspector

The display properties for this view provide the same functionality as the header. Additionally, when dealing with

composite datasets, the display tab shows a widget allowing the user to choose the block to display in the view.

Line Chart View

A traditional 2D line plot is often the best option to show trends in small quantities of data. A line plot is also a good

choice to examine relationships between different data values that vary over the same domain.

Any reader, source, or filter that produces plottable data can be displayed in an XY plot view. ParaView stores its

plotable data in a table (vtkTable). Using the display properties, users can choose which columns in the table must be

plotted on the X and Y axes.

As with the other view types, what is displayed in the active XY plot view is displayed by and controllable with the

eye icons in the Pipeline Browser panel. When an XY plot view is active, only those filters that produce plotable

output have eye icons.

The XY plot view is the preferred view type for the Plot over Line, Plot Point over Time, Plot Cell over Time, Plot

Field Variable over Time, and Probe Location over Time filters. Creating any one of these filters will automatically

create an XY plot view for displaying its output. Figure 4.19 shows a plot of the data values within a volume as they

vary along three separate paths. The top curve comes from the line running across the center of the volume, where

the largest values lie. The other two curves come from lines running near the edges of the volume.

Views, Representations and Color Mapping 47

Unlike the 3D and 2D render view, the charting views are client-side views i.e. they deliver the data to be plotted to

the client. Hence ParaView only allows results from some standard filters such as Plot over Line in the line chart

view by default. However it is also possible to plot cell or point data arrays for any dataset by apply the Plot Data

filter.

Figure 4.19 Plot of data values within a volume

Interaction

The line chart view supports the following interaction modes:

•Right-click and drag to pan

•Left-click and drag to select

•Middle-click and drag to zoom to region drawn.

•Hover over any line in the plot to see the details for the data at that location.

To reset the view, use the Reset Camera button in the Camera Toolbar.

Views, Representations and Color Mapping 48

View Settings

The View Settings for Line Chart enable the user to control the appearance of the chart including titles, axes

positions etc. There are several pages available in this dialog. The General page controls the overall appearance of

the chart, while the other pages controls the appearance of each of the axes.

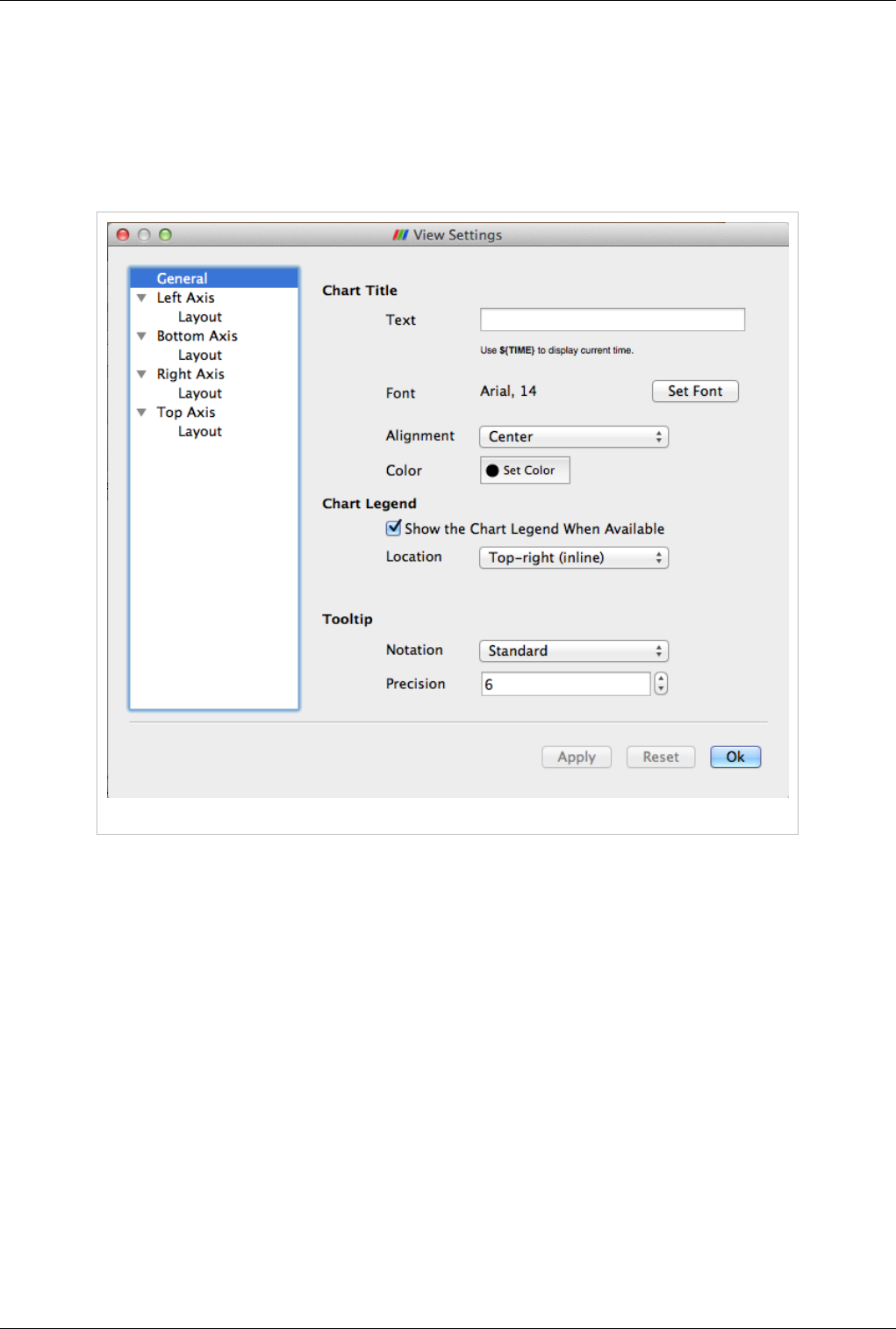

General Settings Page

Figure 4.20 General Settings panel

This page allows users to edit settings not related to any of the axes.

Chart Title

Specify the text and characteristics (such as color, font) for the title for the entire chart. To show the current

animation time in the title text, simply use the keyword ${TIME}.

Chart Legend

When data is plotted in the view, ParaView shows a legend. Users can change the location for the legend.

Tooltip

Specify the data formatting for the hover tooltips. The default Standard switches between scientific and fixed point

notations based on the data values.

Views, Representations and Color Mapping 49

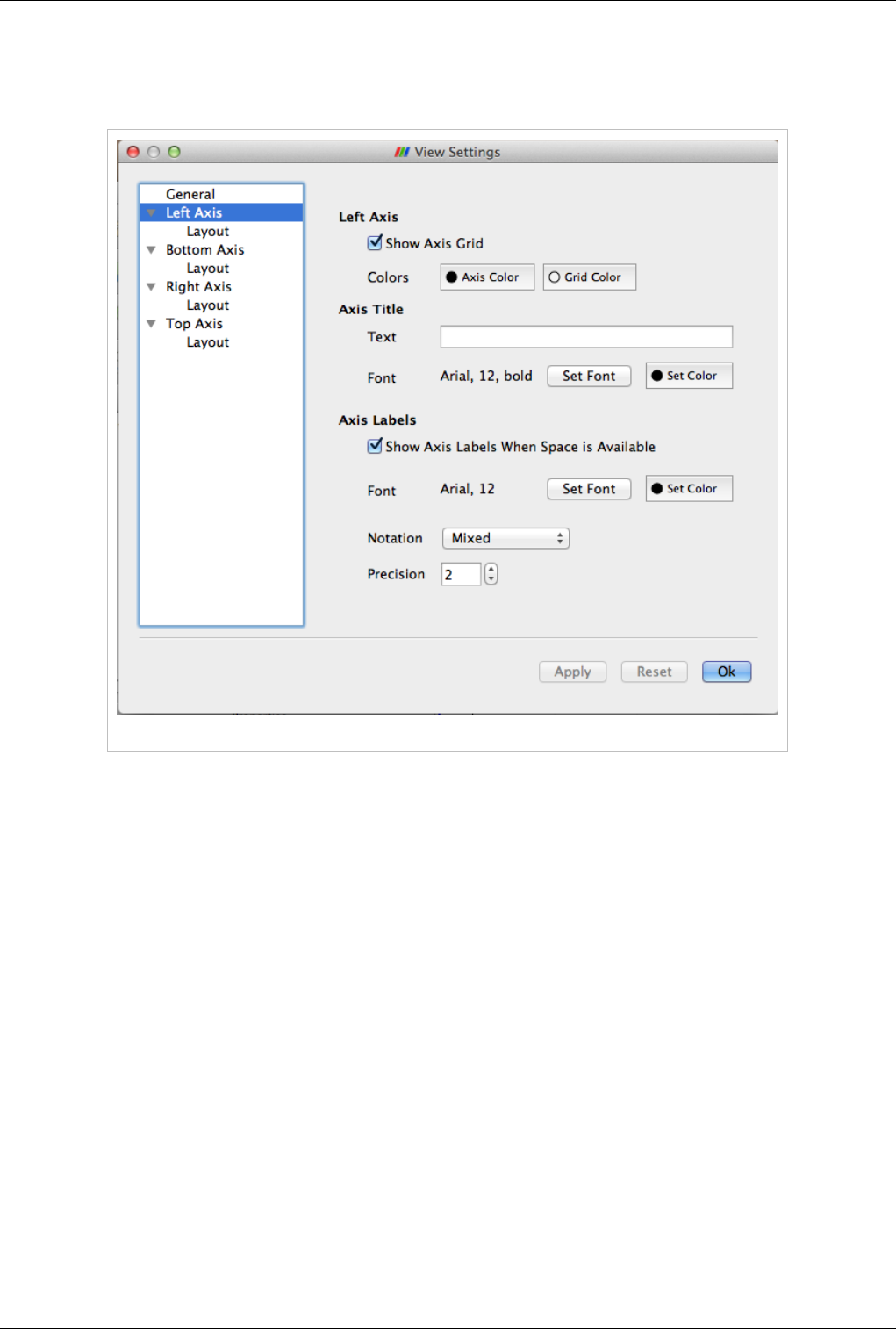

Axis Settings Page

On this page you can change the properties of a particular axis. Four pages are provided for each of the axes. By

clicking on the name of the axis, you can access the settings page for the corresponding axes.

Figure 4.21 Axis Settings panel

Left/Bottom/Right/Top Axis

• Show Axis Grid: controls whether a grid is to be drawn perpendicular to this axis

• Colors: controls the axis and the grid color

Axis Title

Users can choose a title text and its appearance for the selected axis.

Axis Labels

Axis labels refers to the labels drawn at tick marks along the axis. Users can control whether the labels are rendered

and their appearance including color, font and formatting. User can control the locations at which the labels are

rendered on the Layout page for the axis.

• Show Axis Labels When Space is Available : controls label visibility along this axis

• Font and Color: controls the label font and color

• Notation: allows user to choose between Mixed, Scientific and Fixed point notations for numbers

• Precision: controls precision after '.' in Scientific and Fixed notations

Views, Representations and Color Mapping 50

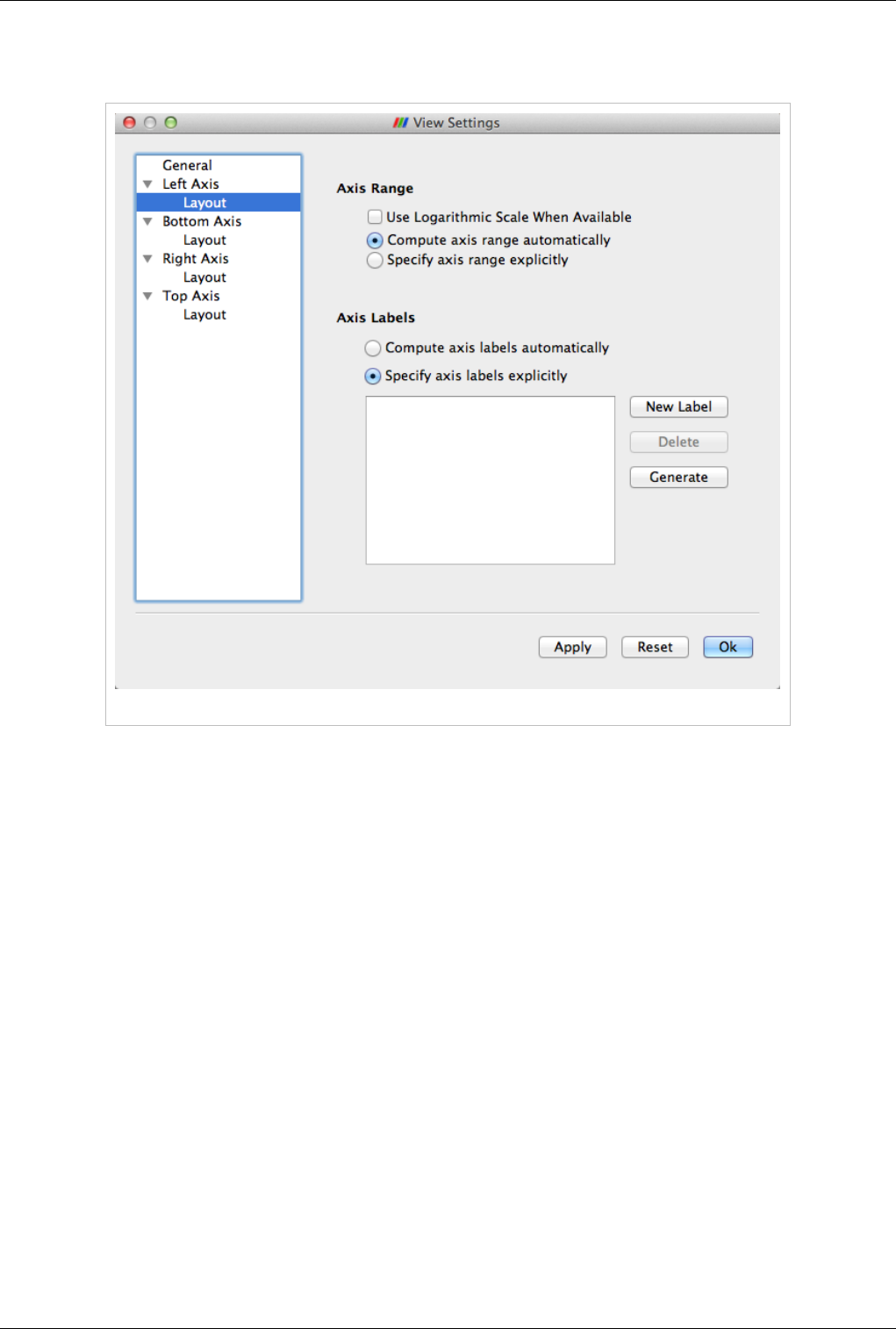

Axis Layout Page

This page allows the user to change the axis range as well as label locations for the axis.

Figure 4.22 Axis Layout panel

Axis Range

Controls how the data is plotted along this axis.

• Use Logarithmic Scale When Available: Check this to use a log scale unless the data contains numbers <= 0.

• Compute axis range automatically: Select this button to let the chart use the optimal range and spacing for this

axis. The chart will adjust the range automatically every time the data displayed in the view changes.

• Specify axis range explicitly: Select this button to specify the axis range explicitly. When selected, user can enter

the minimum and maximum value for the axis. The range will not change even when the data displayed in the

view changes. However, if the user manually interacts with the view (i.e. pans, or zooms), then the range

specified is updated based on the user's interactions.

Views, Representations and Color Mapping 51

Axis Labels

Controls how the labels are rendered along this axis. Users can control the labeling independently of the axis range.

• Compute axis labels automatically: Select this button to let the chart pick label locations optimally based on the

viewport size and axis range.

• Specify axis labels explicitly: Select this button to explicitly specify the data values at which labels should be

drawn.

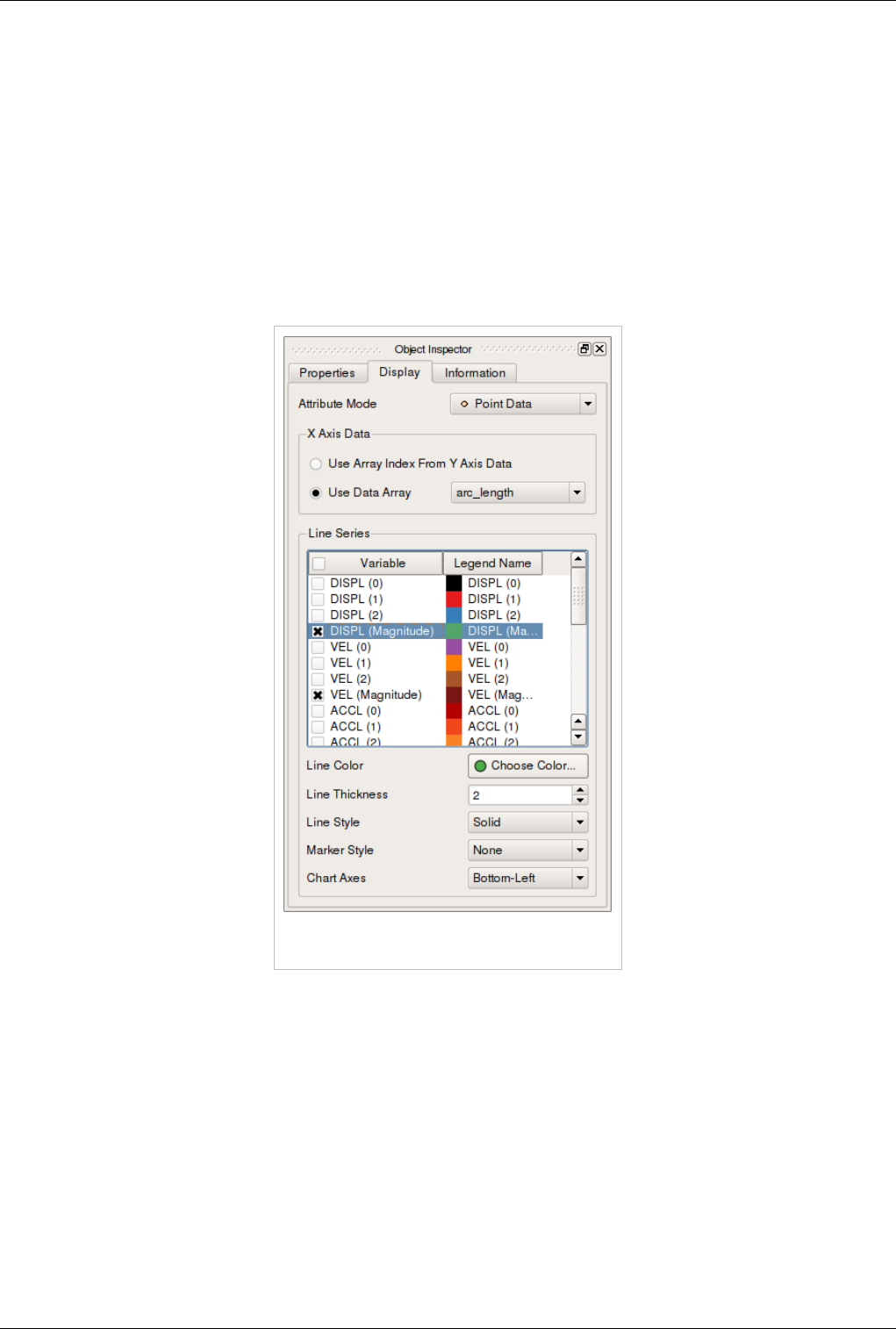

Display Properties

Display Properties for the Line Chart view allow the user to choose what arrays are plotted along which of the axes

and the appearance for each of the lines such as its color, thickness and style.

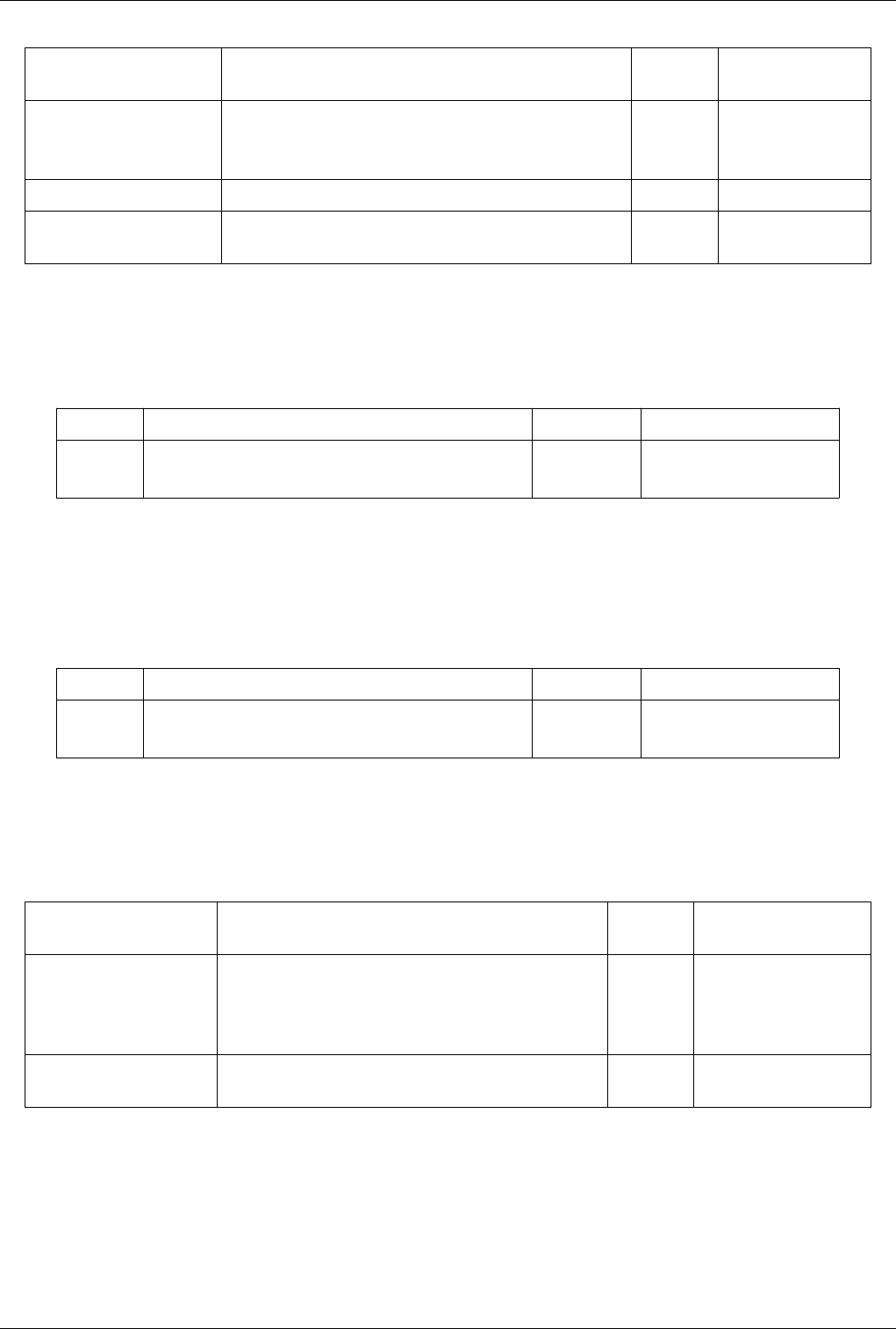

Figure 4.24 Display Properties within the Object

Inspector

• Attribute Mode: pick which attribute arrays to plot i.e. point arrays, cell arrays, etc.

• X Axis Data: controls the array to use as the X axis.

• Use Array Index From Y Axis Data: when checked, results in ParaView using the index in data-array are

plotted on Y as the X axis.

• Use Data Array: when checked the user can pick an array to be interpreted as the X coordinate.

• Line Series: controls the properties of each of the arrays plotted along the Y axis.

• Variable: check the variable to be plotted.

• Legend Name: click to change the name used in the legend for this array.

Select any of the series in the list to change following properties for that series. You can select multiple entries to

change multiple series.

Views, Representations and Color Mapping 52

• Line Color: controls the color for the series.

• Line Thickness: controls the thickness for the series.

• Line Style: controls the style for the line.

• Marker Style: controls the style used for those markers, which can be placed at every data point.

Bar Chart View

Traditional 2D graphs present some types of information much more readily than 3D renderings do; they are usually

the best choice for displaying one and two dimensional data. The bar chart view is very useful for examining the

relative quantities of different values within data, for example.

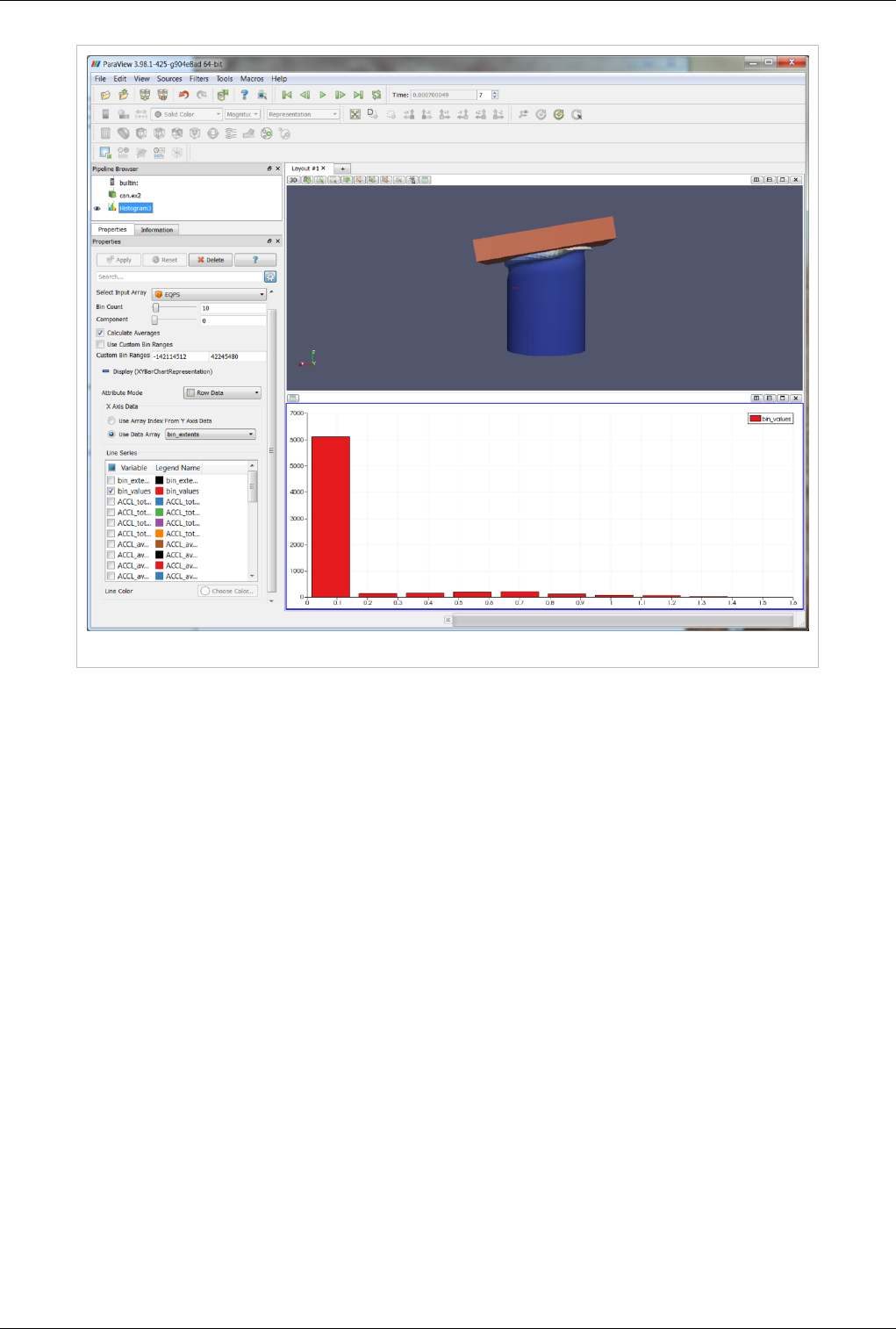

The bar chart view is used most frequently to display the output of the histogram filter. This filter divides the range

of a component of a specified array from the input data set into a specified number of bins, producing a simple

sequence of the number of values in the range of each bin. A bar chart is the natural choice for displaying this type of

data. In fact, the bar chart view is the preferred view type for the histogram filter. Filters that have a preferred view

type will create a view of the preferred type whenever they are instantiated.

When the new view is created for the histogram filter, the pre-existing 3D view is made smaller to make space for

the new chart view. The chart view then becomes the active view, which is denoted with a red border around the

view in the display area. Clicking on any view window makes it the active view. The contents of the Object

Inspector and Pipeline Browser panels change and menu items are enabled or disabled whenever a different view

becomes active to reflect the active view’s settings and available controls. In this way, you can independently control

numerous views. Simply make a view active, and then use the rest of the GUI to change it. By default, the changes

you make will only affect the active view.

As with the 3D View, the visibility of different datasets within a bar chart view is displayed and controlled by the

eye icons in the Pipeline Browser. The bar chart view can only display datasets that contain chartable data, and when

a bar chart view is active, the Pipeline Browser will only display the eye icon next to those datasets that can be

charted.

ParaView stores its chartable data in 1D Rectilinear Grids, where the X locations of the grid contain the bin

boundaries, and the cell data contain the counts within each bin. Any source or filter that produces data in this format

can be displayed in the bar chart view. Figure 4.25 shows a histogram of the values from a slice of a data set.

The Edit View Options for chart views dialog allows you to create labels, titles, and legends for the chart and to

control the range and scaling of each axis.

The Interaction, Display Properties as well as View Settings for this view and similar to those for the Line Chart.

Views, Representations and Color Mapping 53

Figure 4.25 Histogram of values from a slice of a dataset

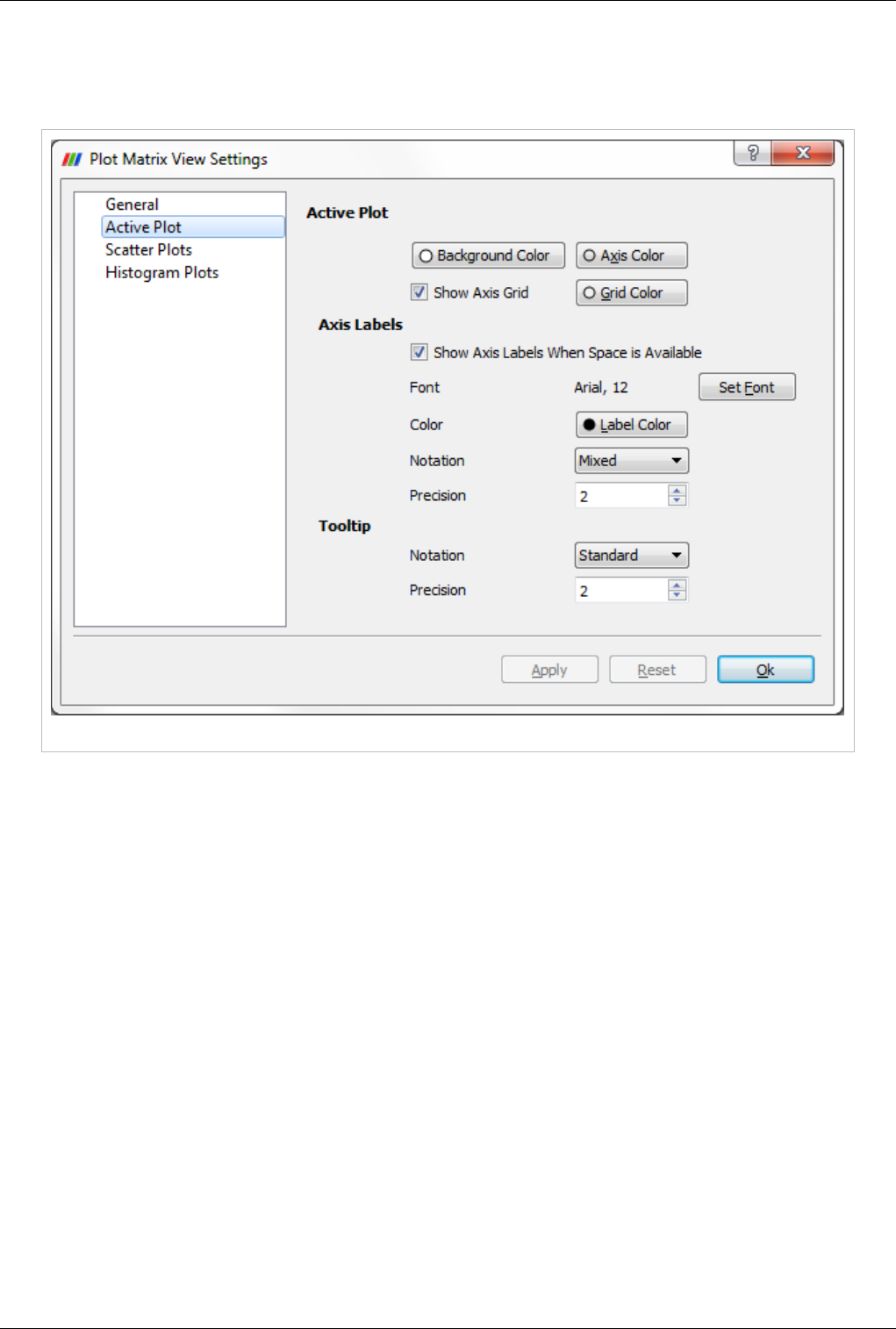

Plot Matrix View

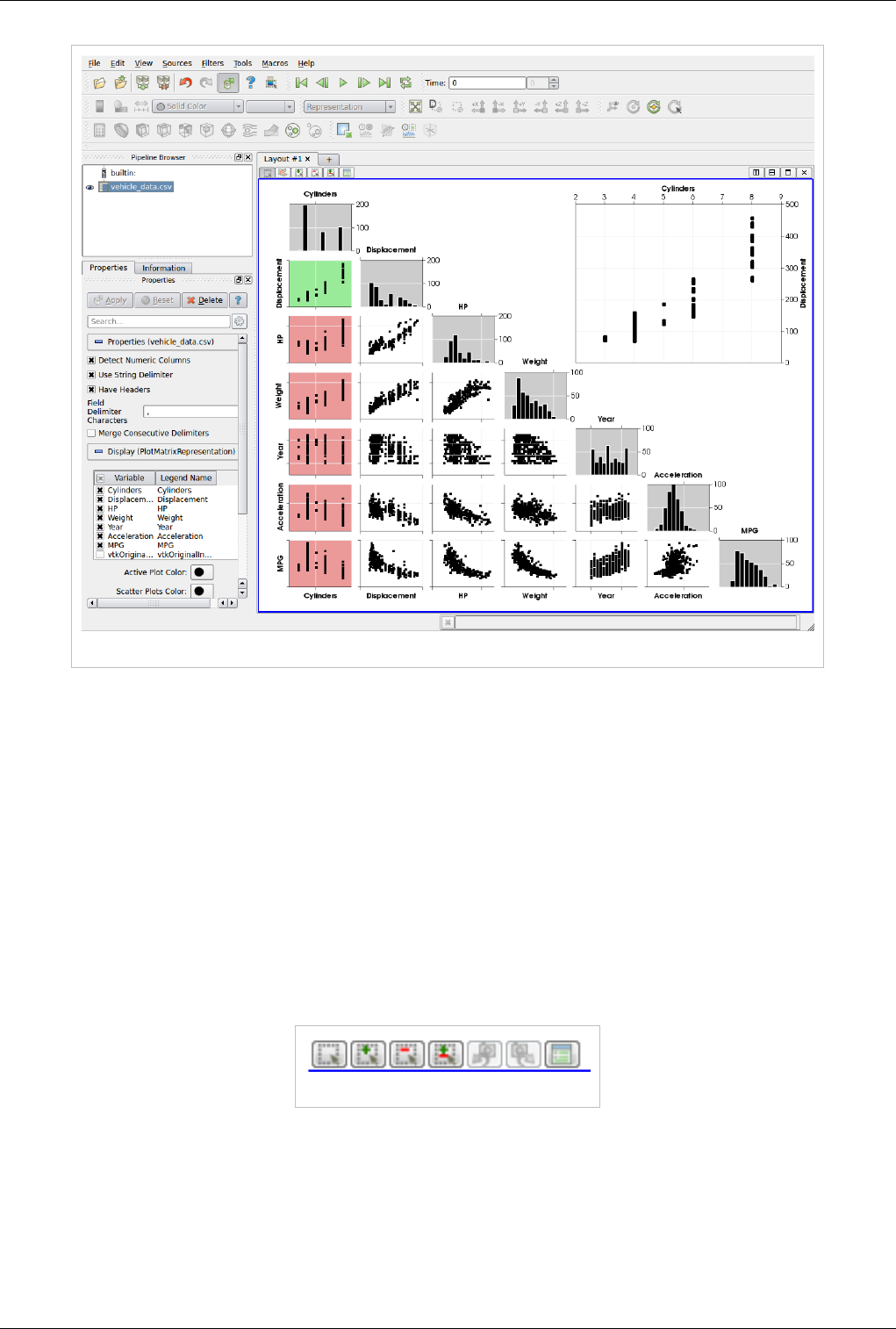

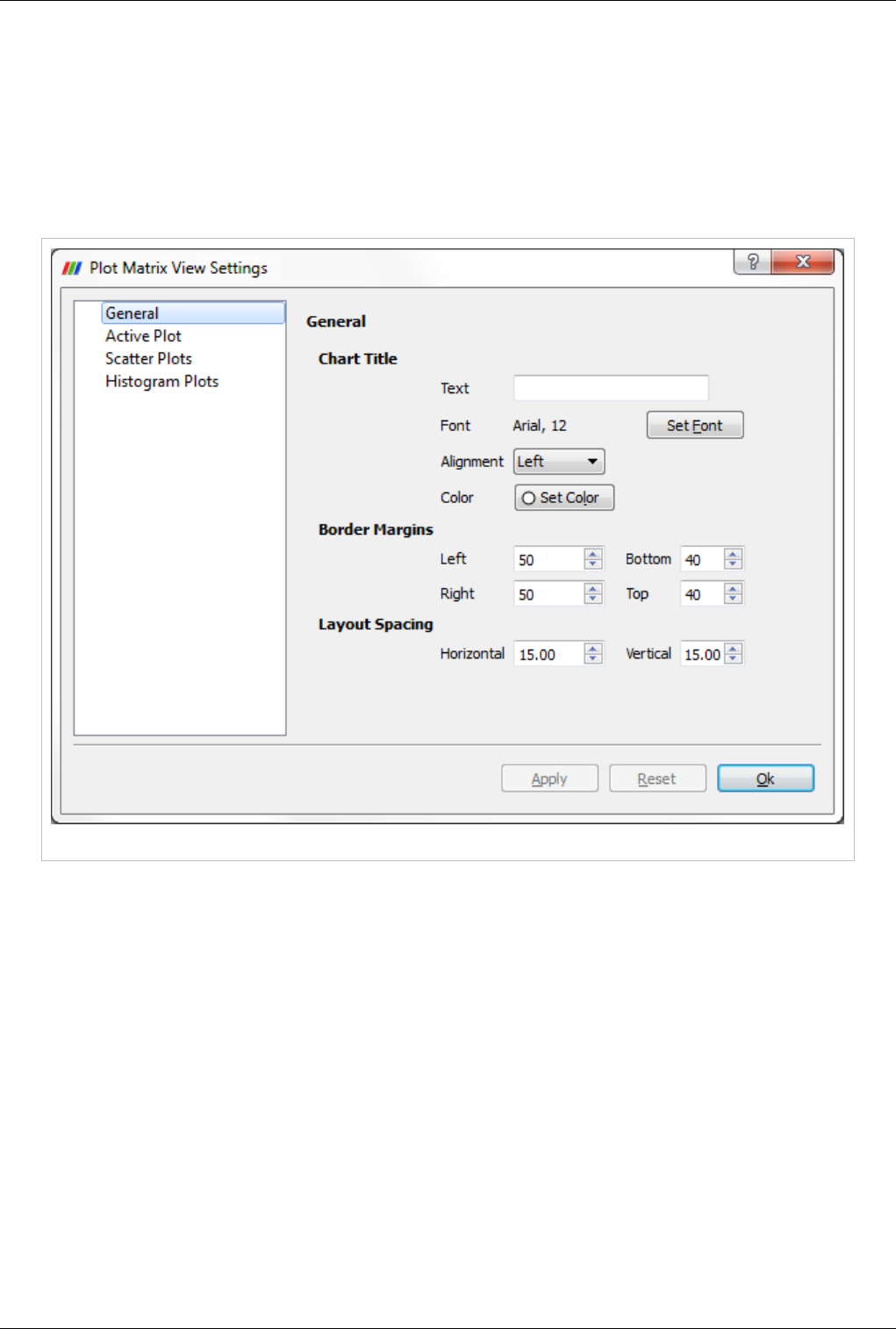

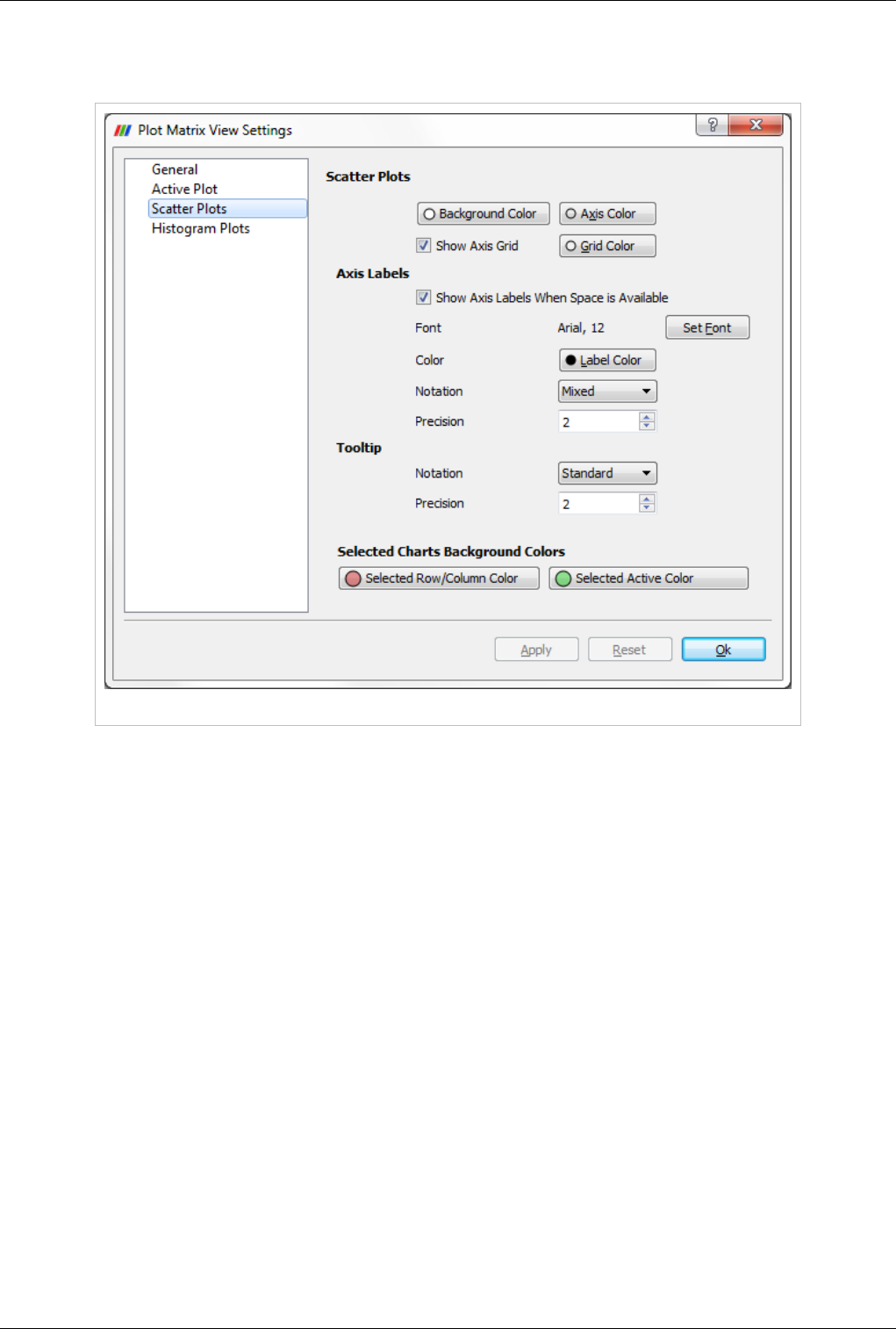

The new Plot-Matrix-View (PMV) allows visualization of multiple dimensions of your data in one compact form. It

also allows you to spot patterns in the small scatter plots, change focus to those plots of interest and perform basic

selection. It is still at an early stage, but the basic features should already be useable, including iterative selection for

all charts (add, remove and toggle selections with Ctrl or Shift modifiers on mouse actions too).

The PMV can be used to manage the array of plots and the vtkTable mapping of columns to input of the charts. Any

filters or sources with an output of vtkTable type should be able to use the view type to display their output. The

PMV include a scatter plot, which consists of charts generated by plotting all vtkTable columns against each other,

bar charts (histograms) of vtkTable columns, and an active plot which shows the active chart that is selected in the

scatter plot. The view offer offers new selection interactions to the charts, which will be describe below in details.

As with the other view types, what is displayed in the active PMV is displayed by and controllable with the eye icons

in the Pipeline Browser panel. Like XY chart views, the PMVs are also client-side views i.e. they deliver the data to

be plotted to the client.

Views, Representations and Color Mapping 54

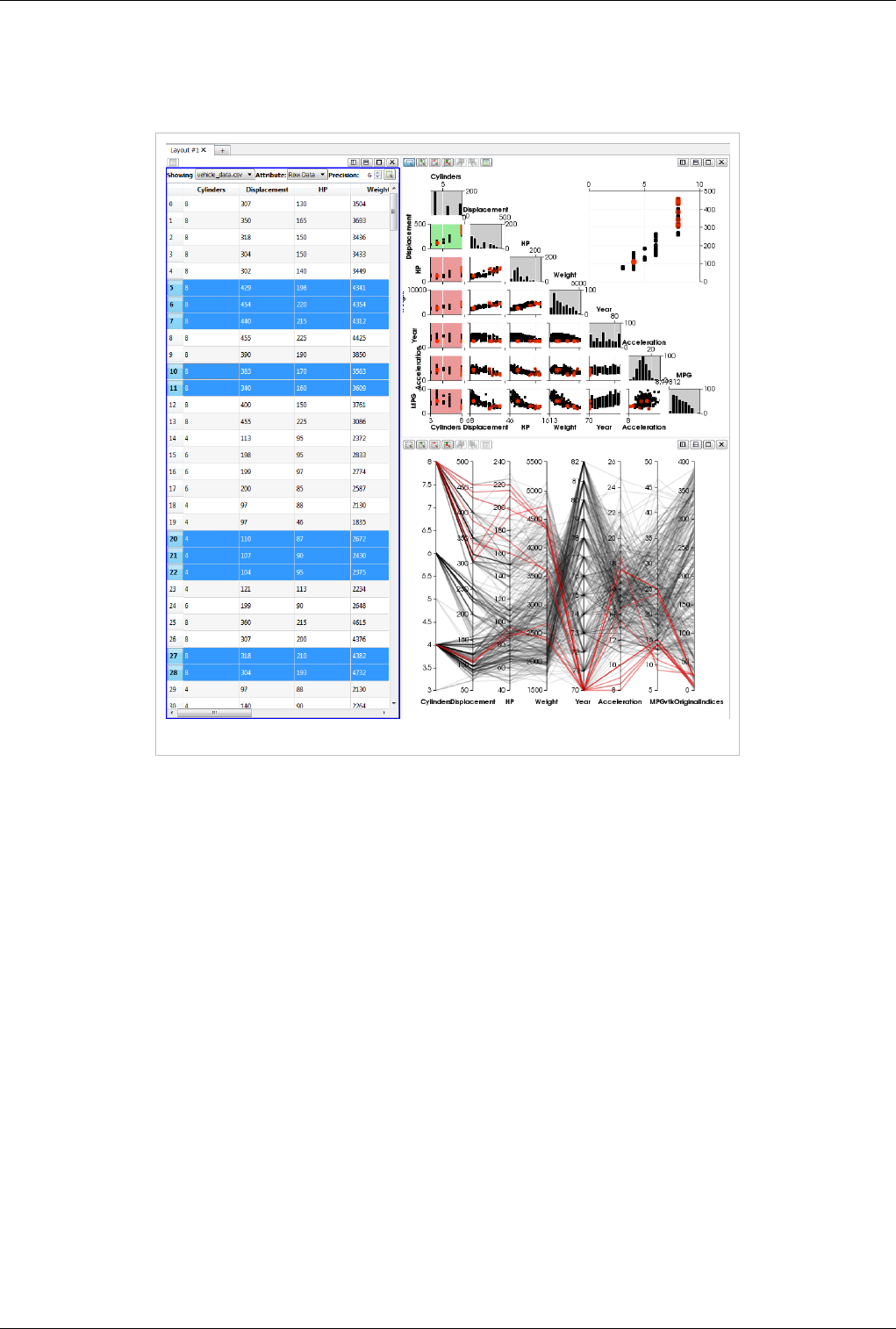

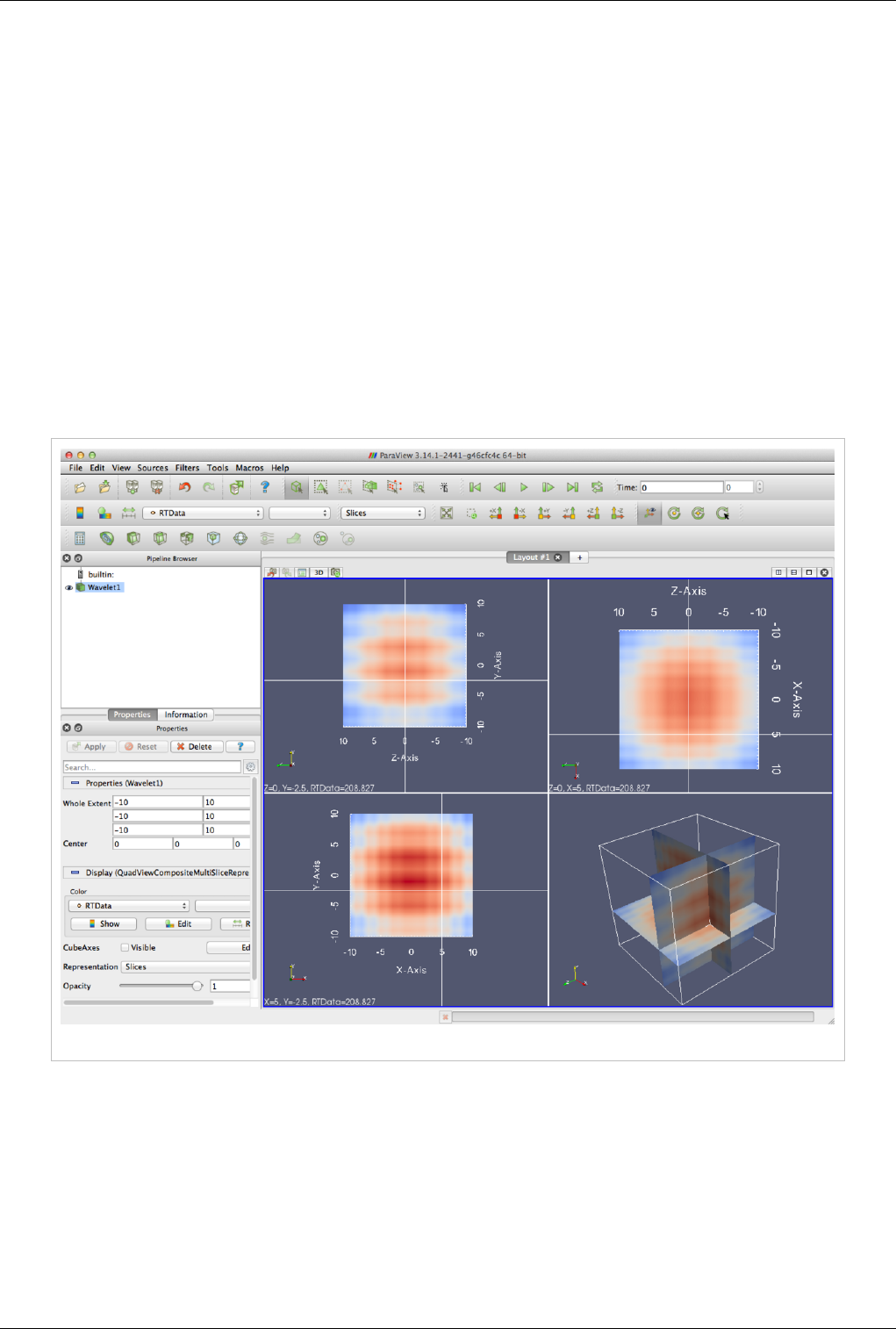

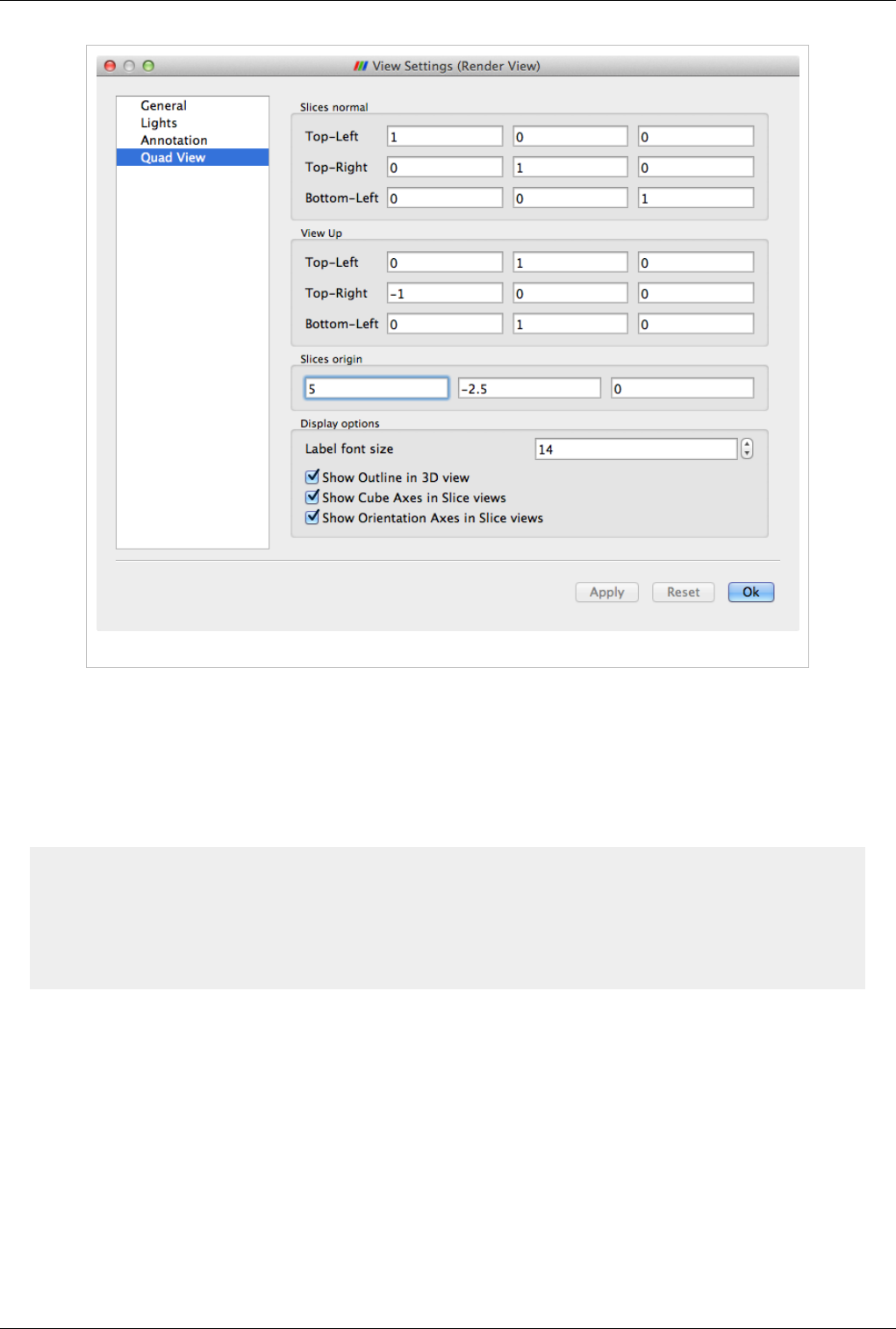

Plot Matrix View Plots of data values in a vtkTable

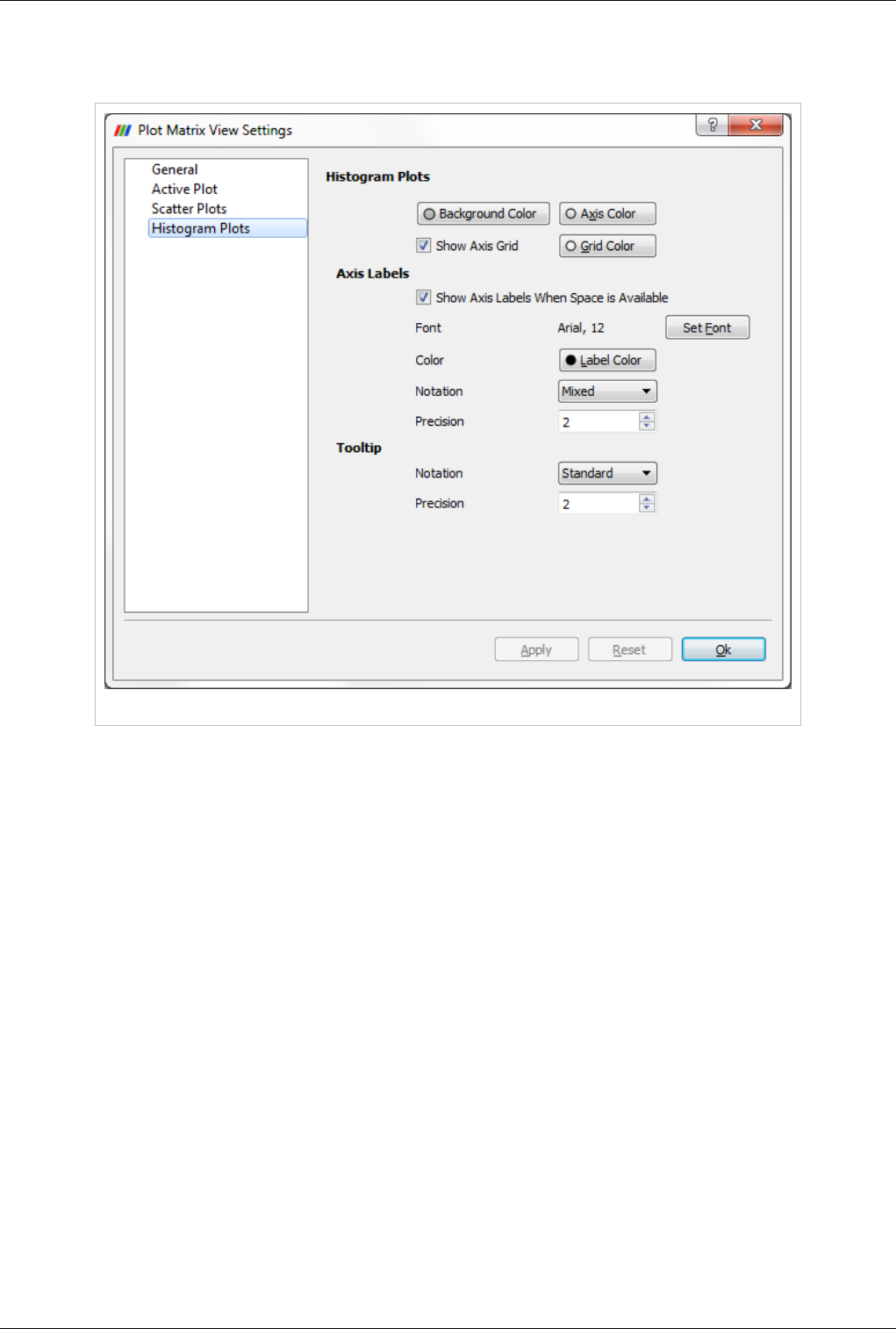

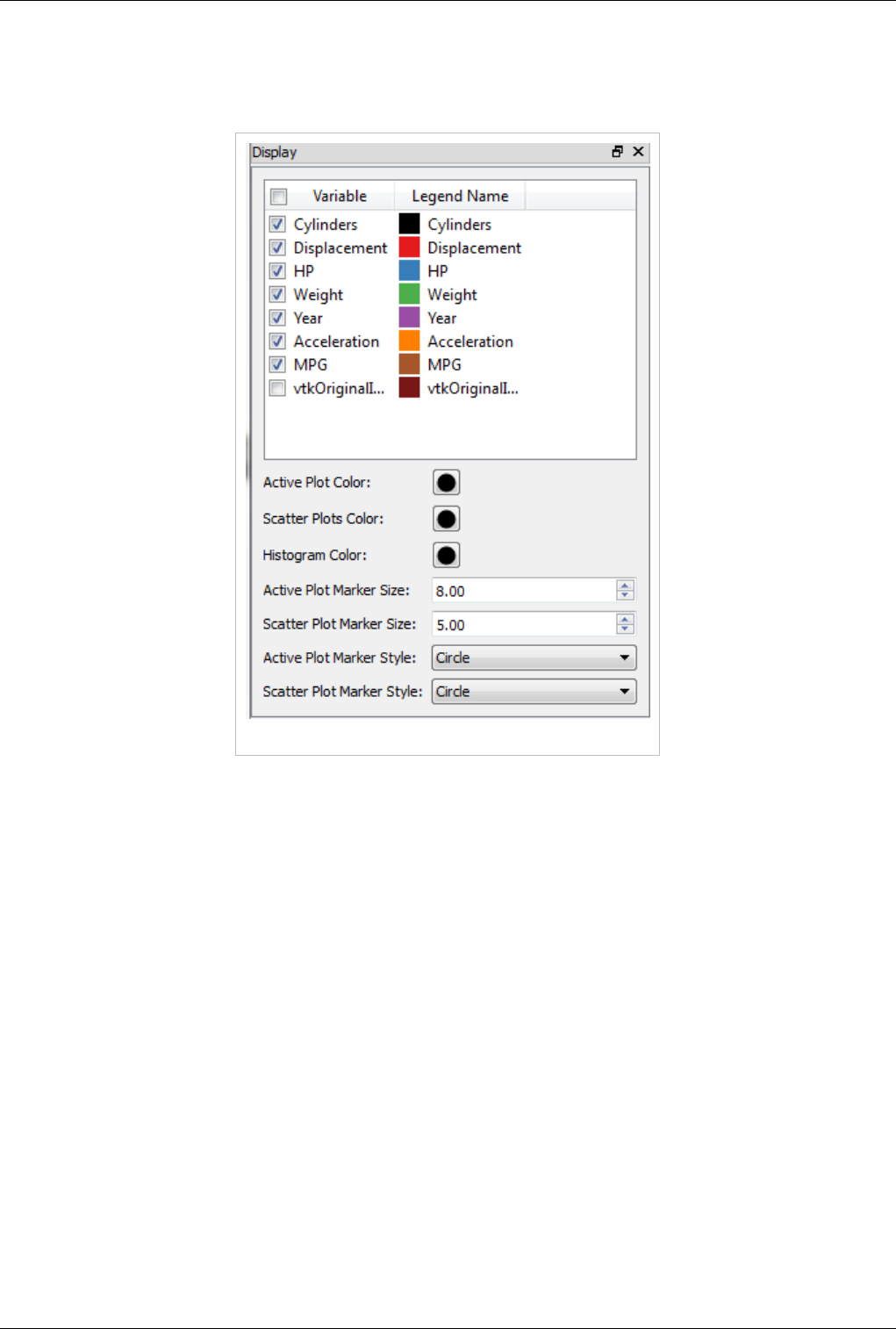

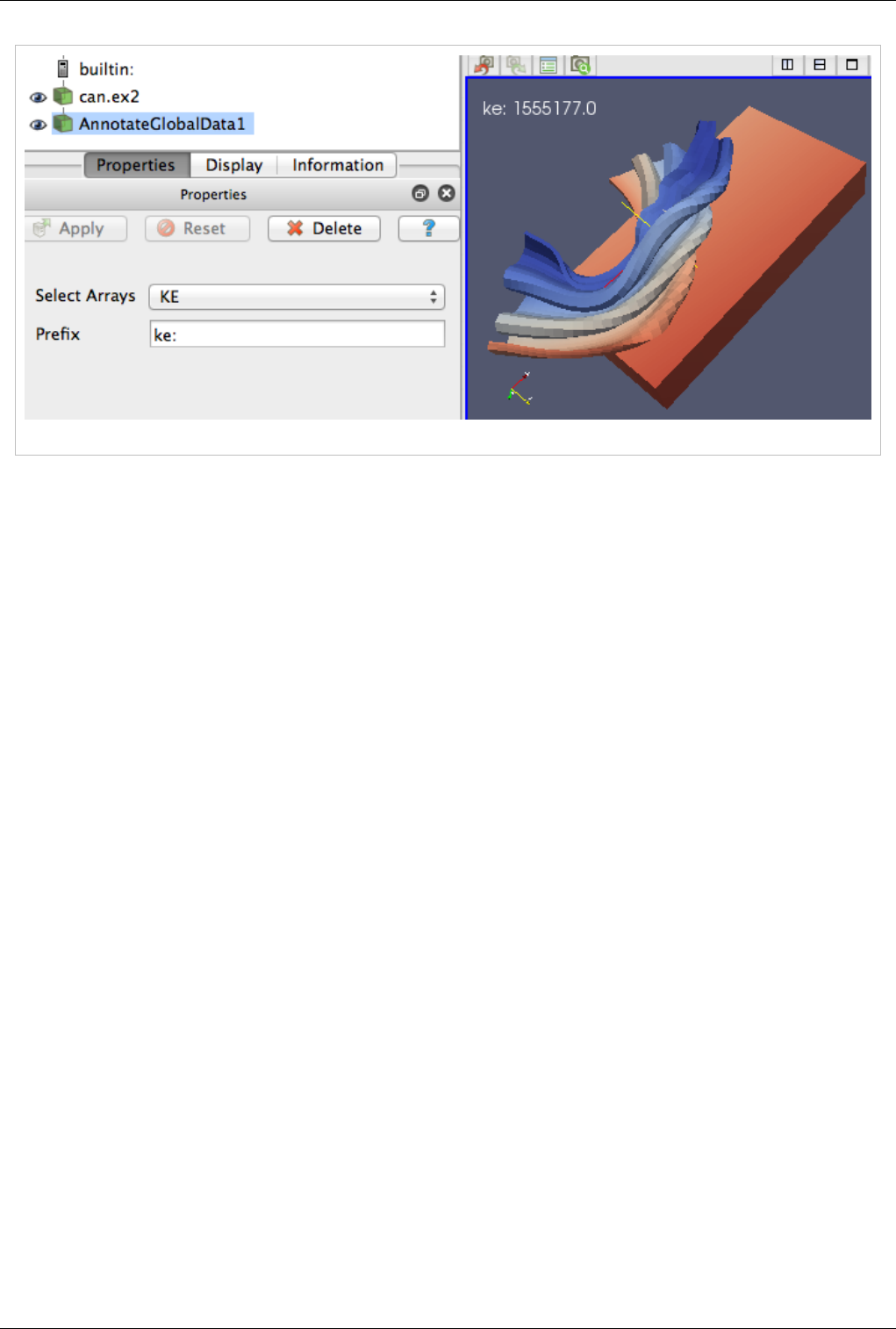

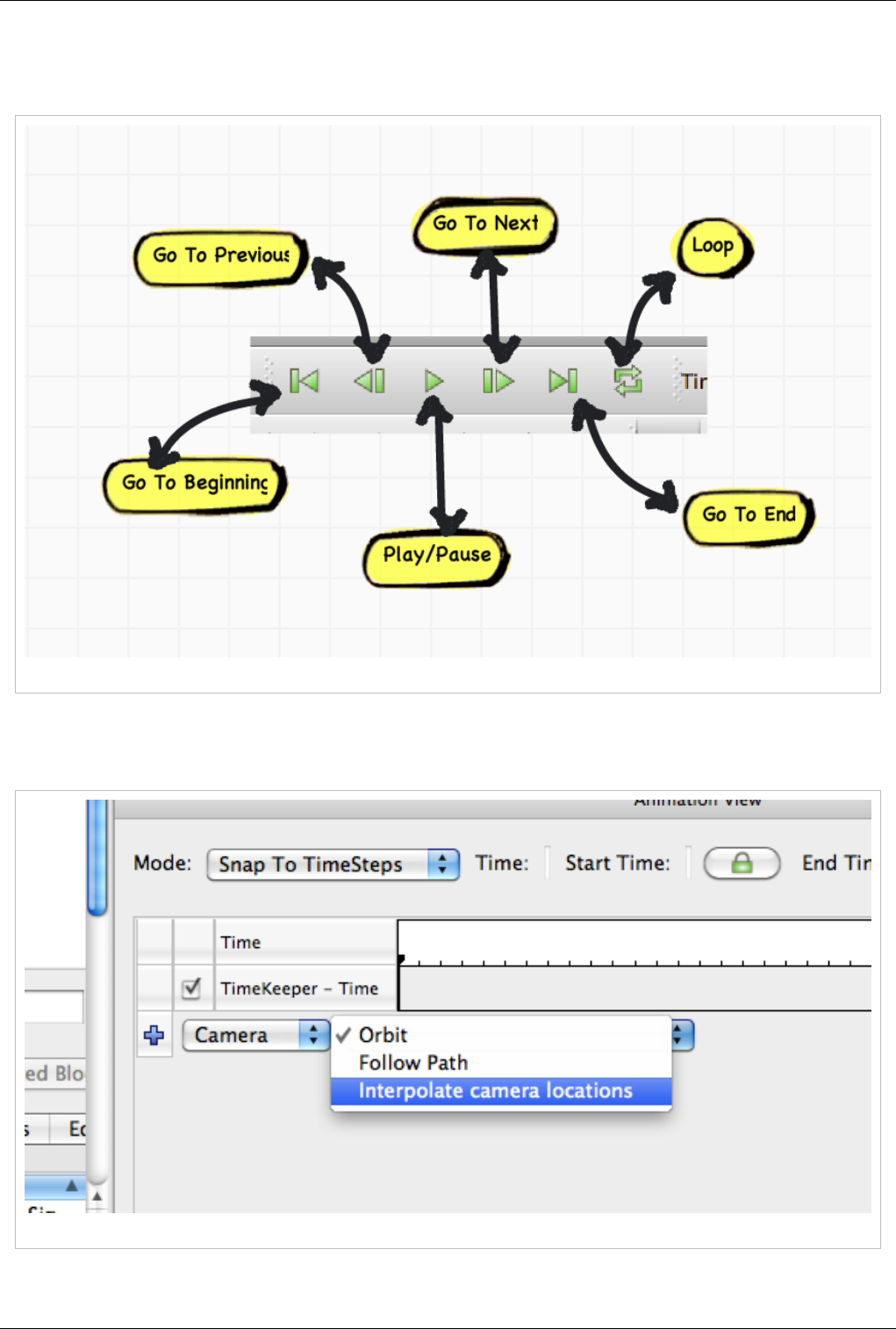

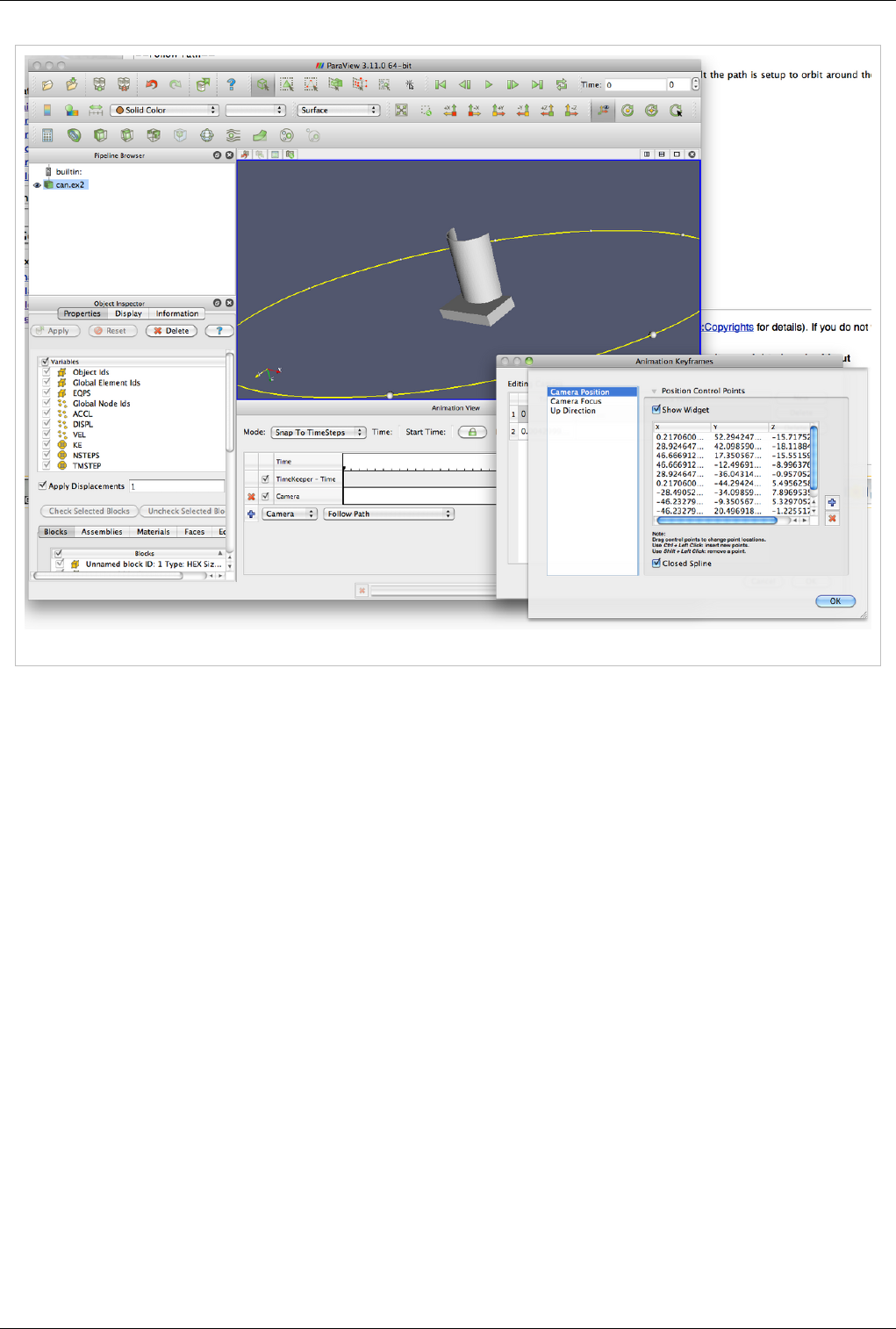

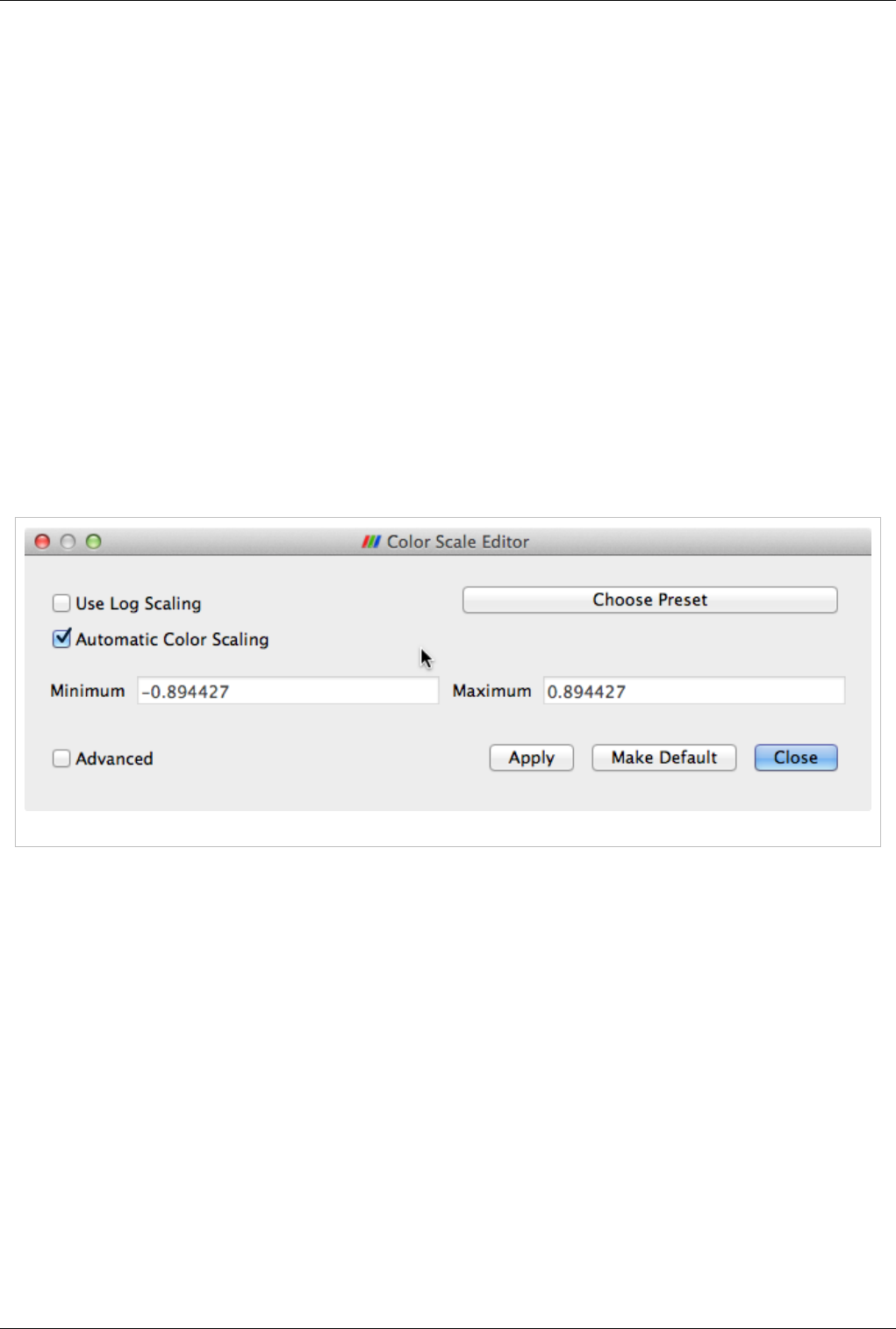

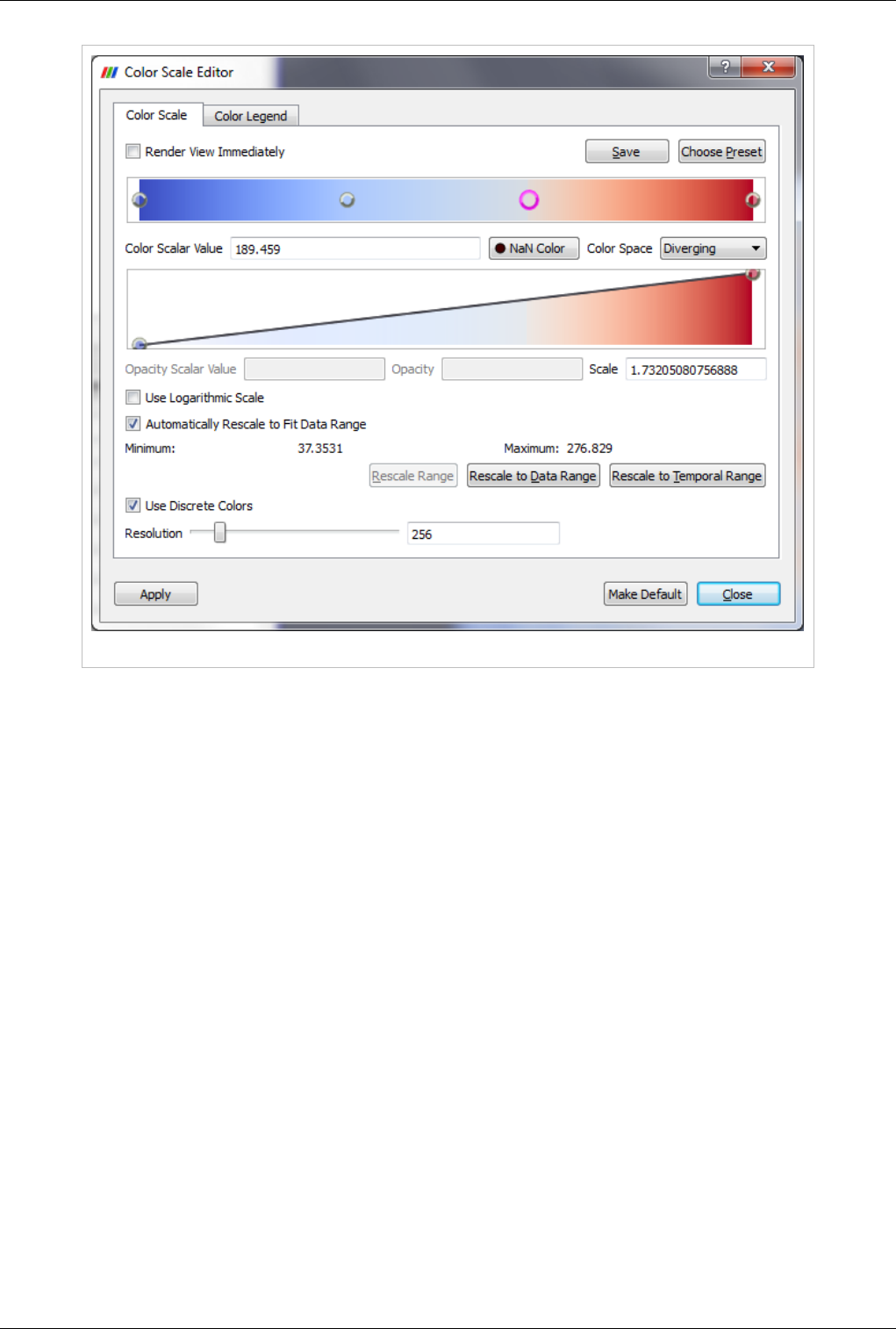

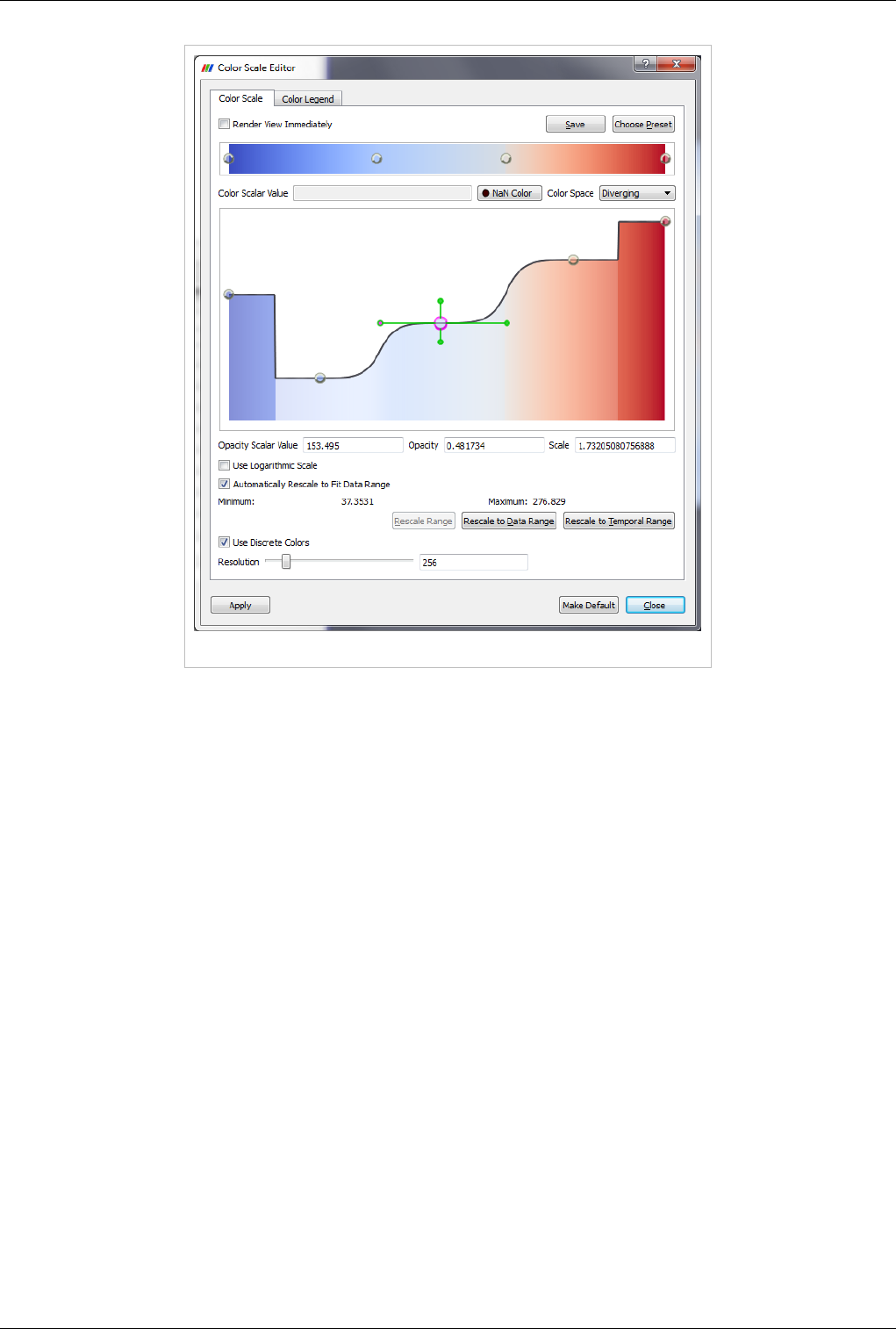

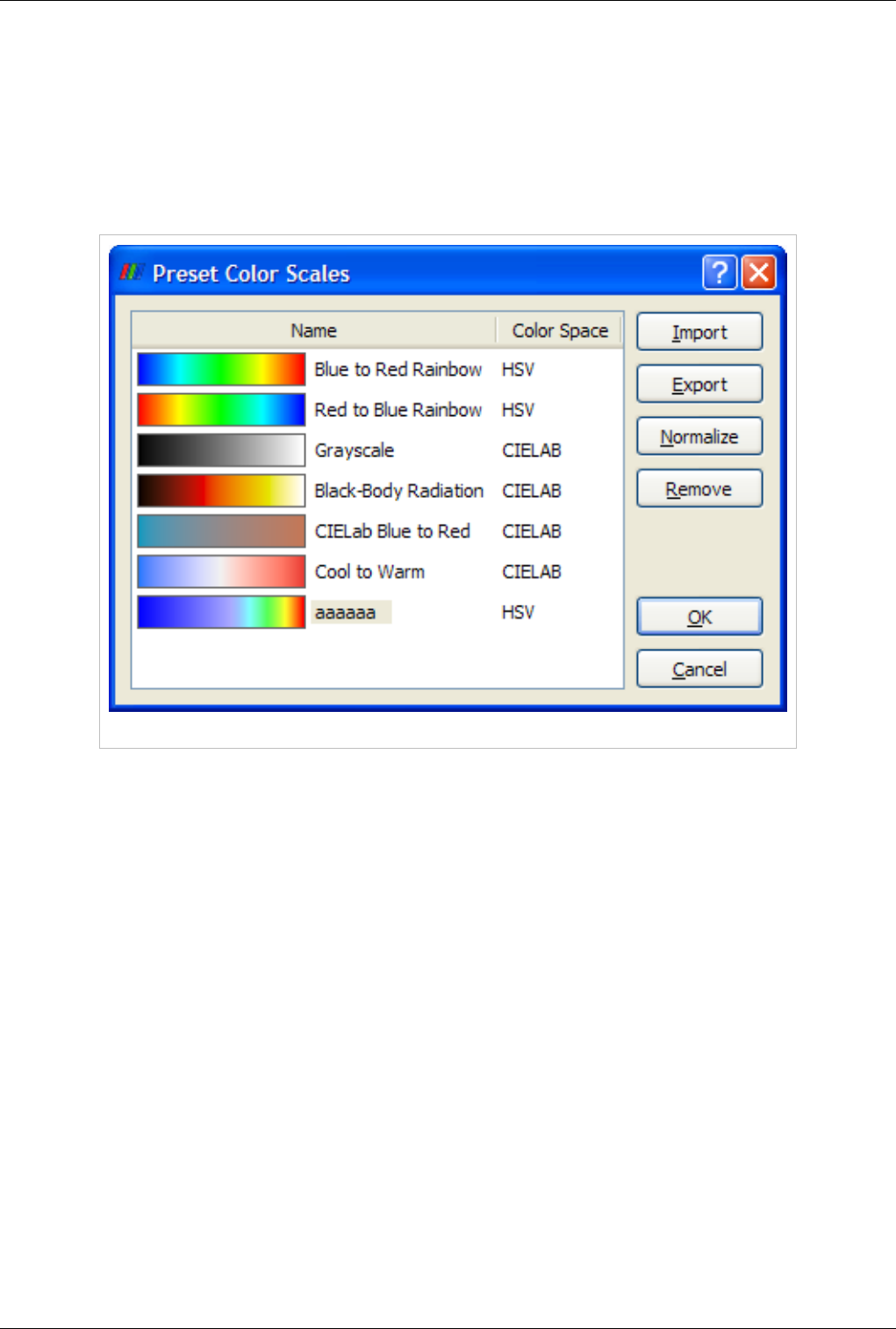

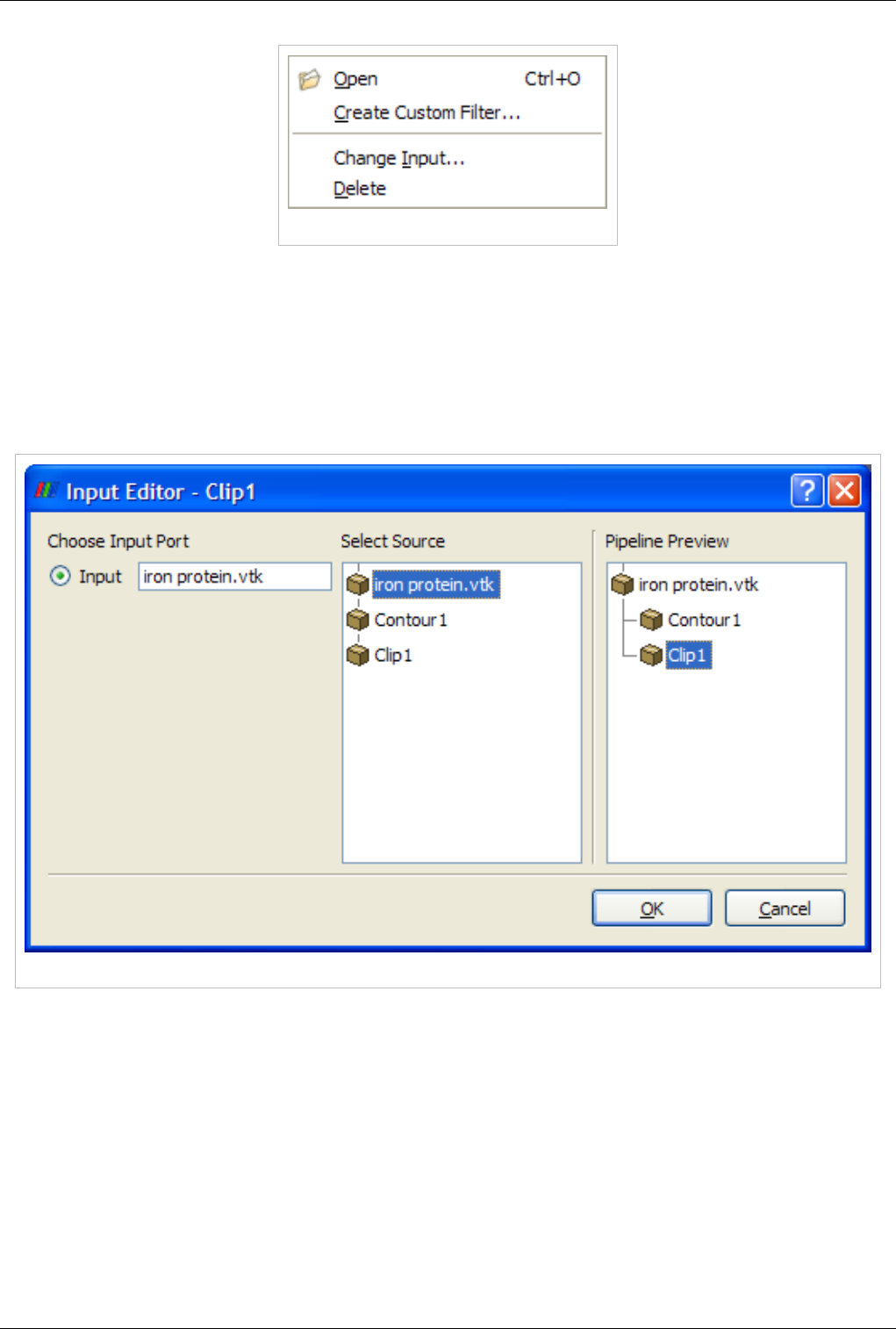

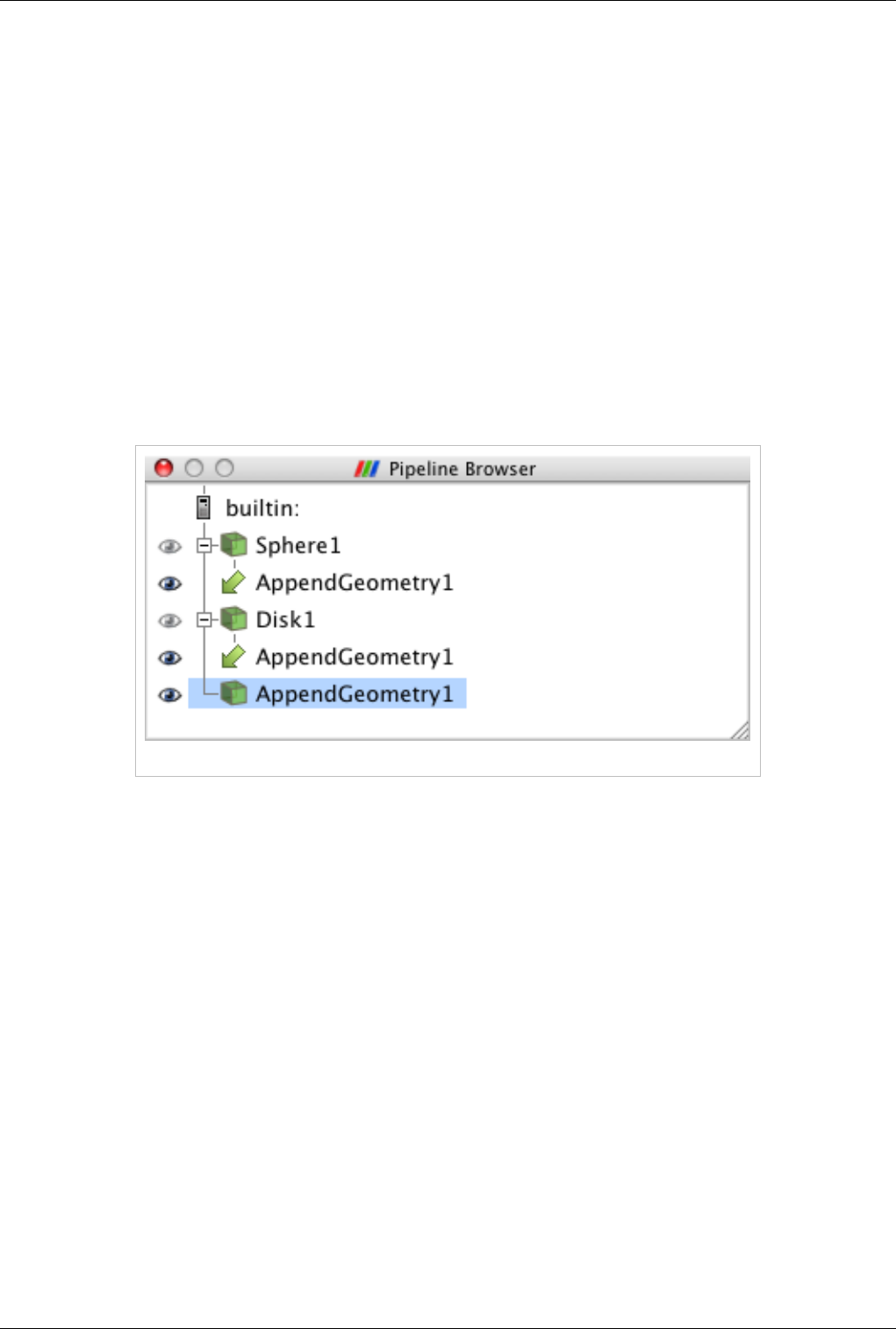

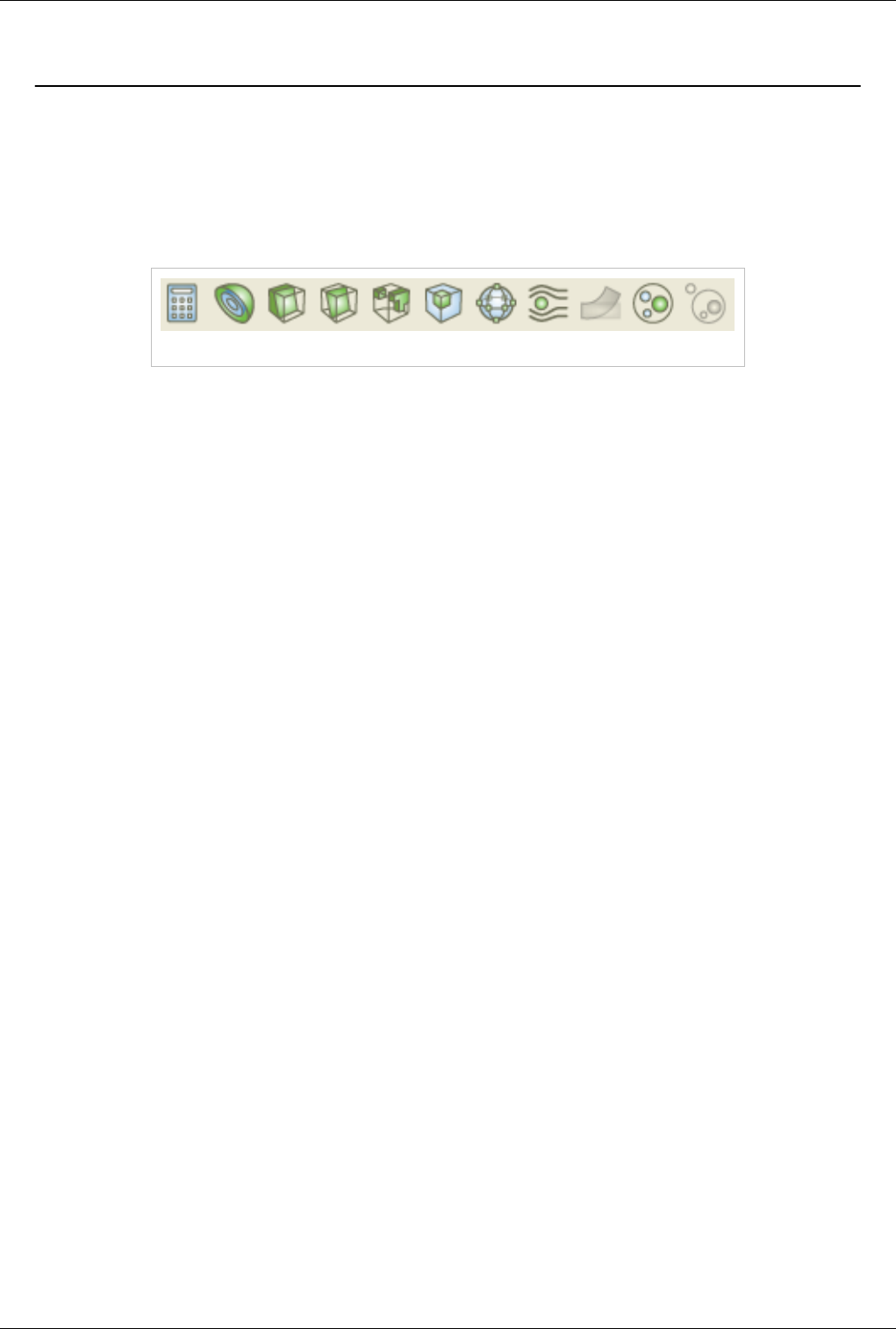

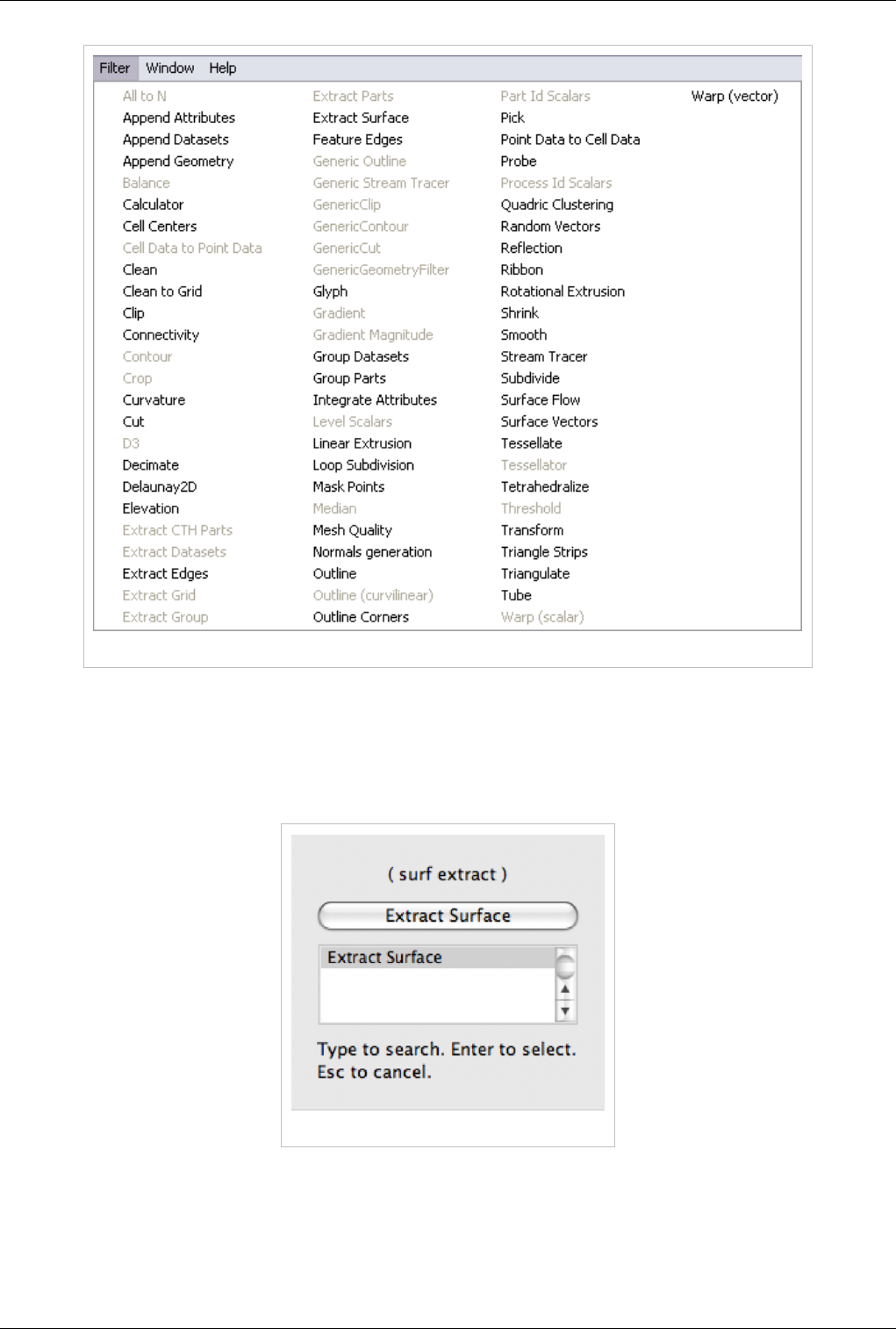

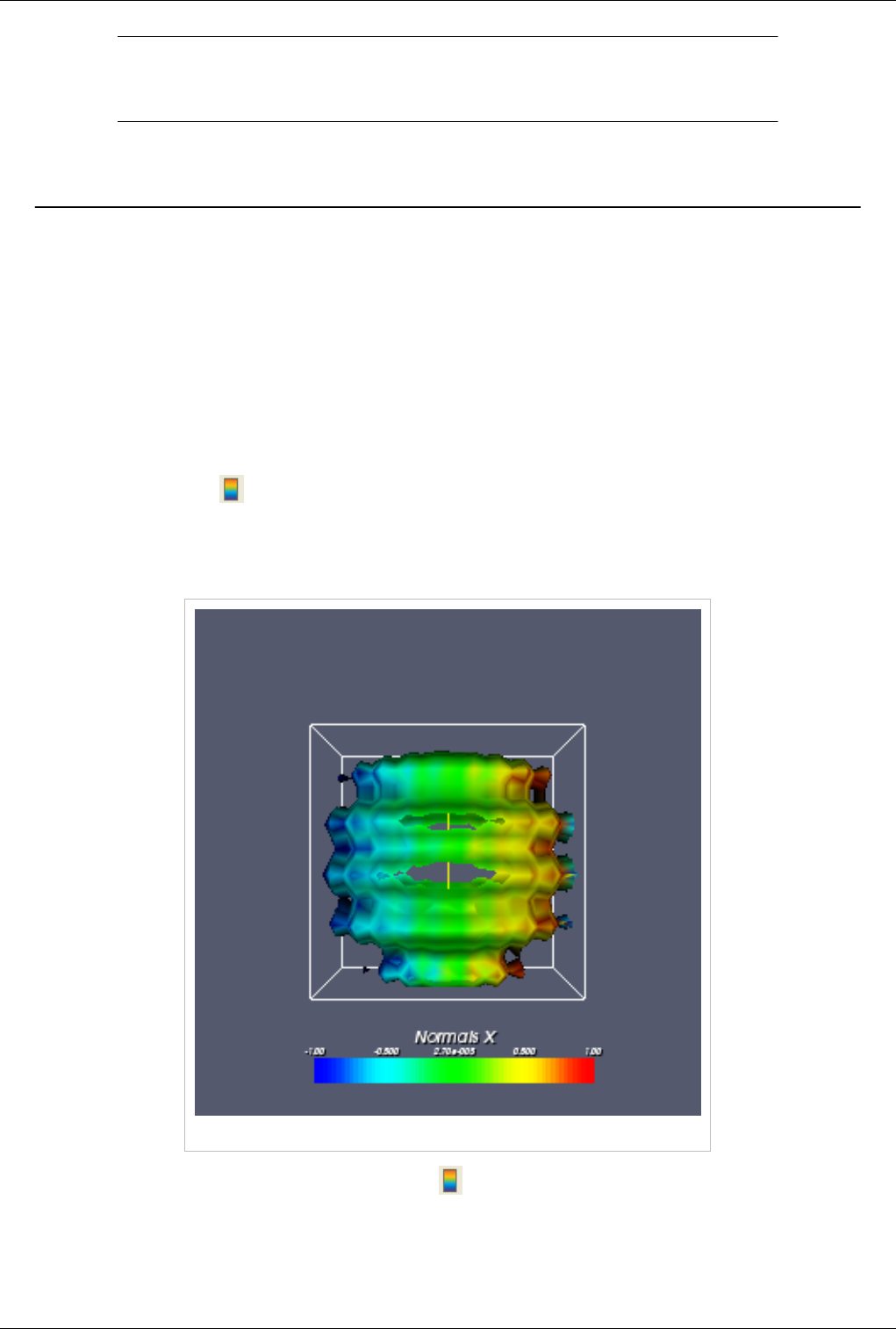

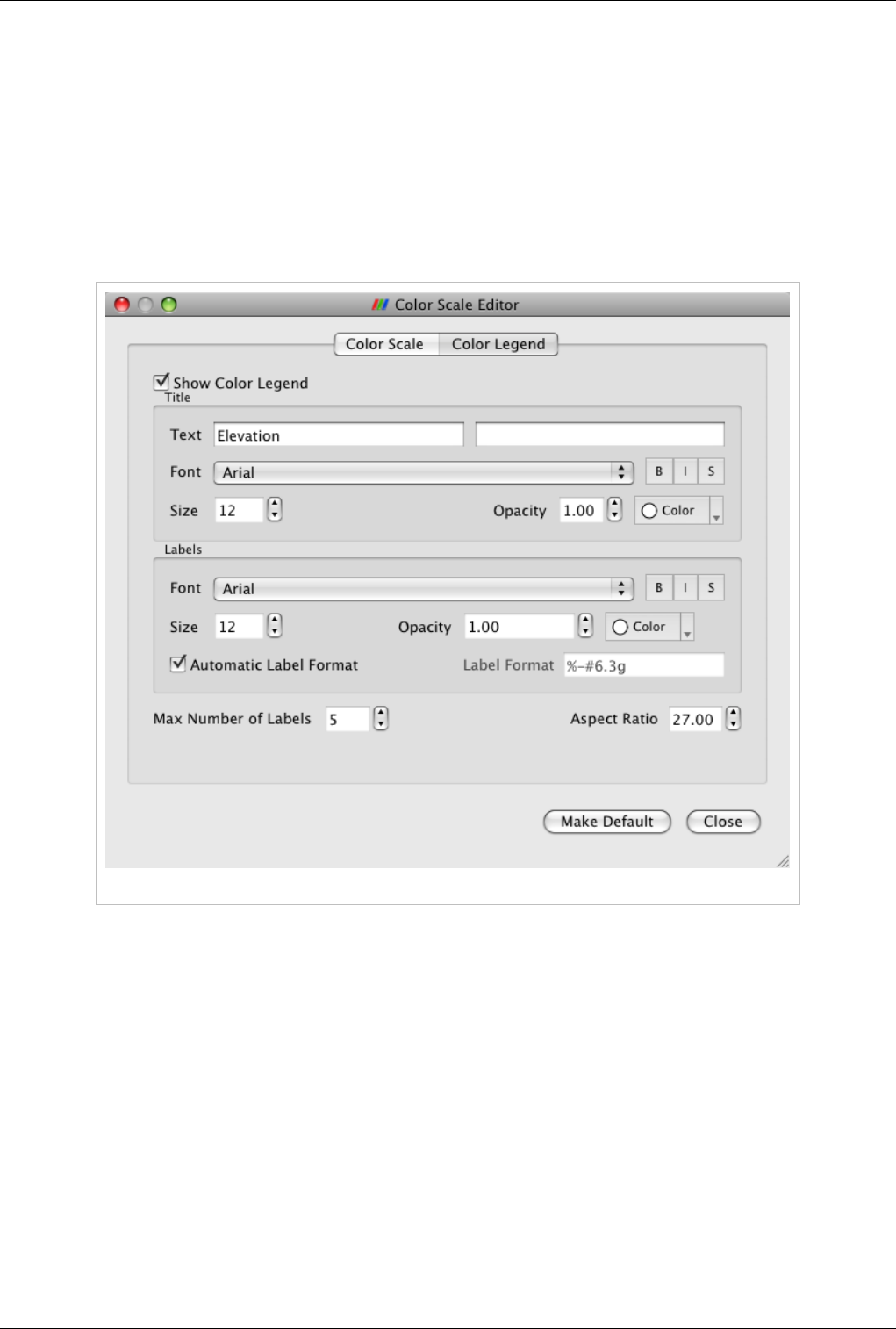

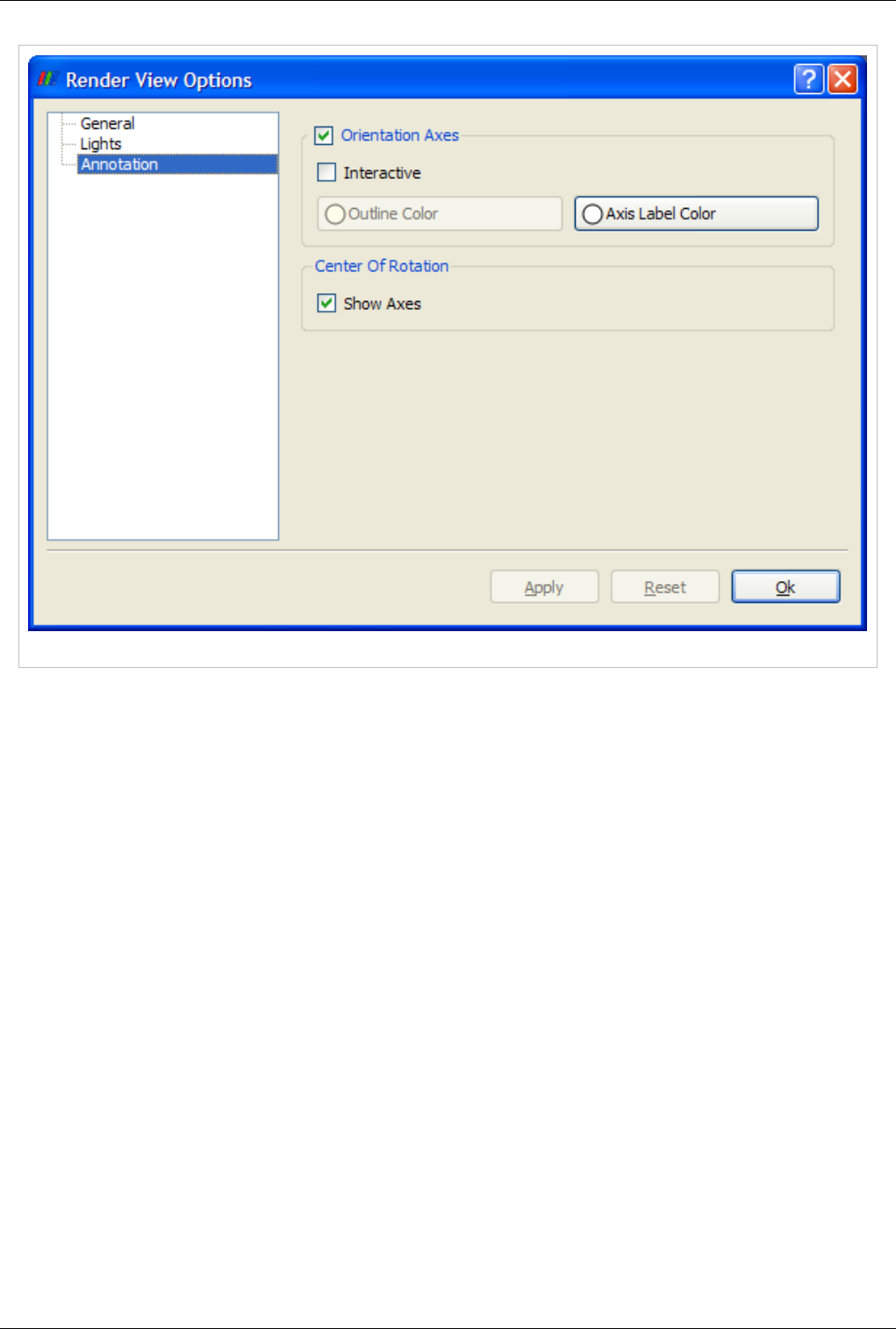

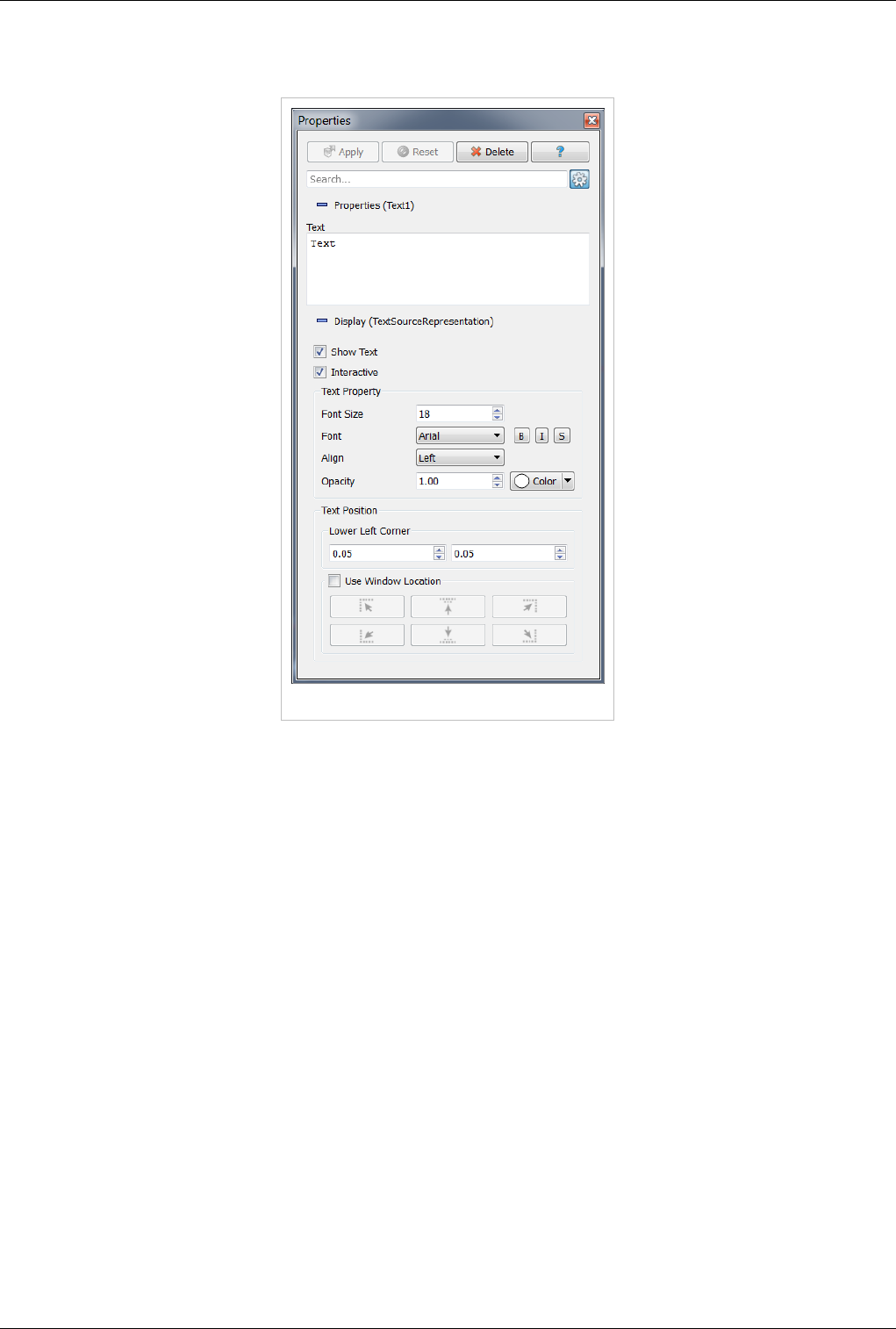

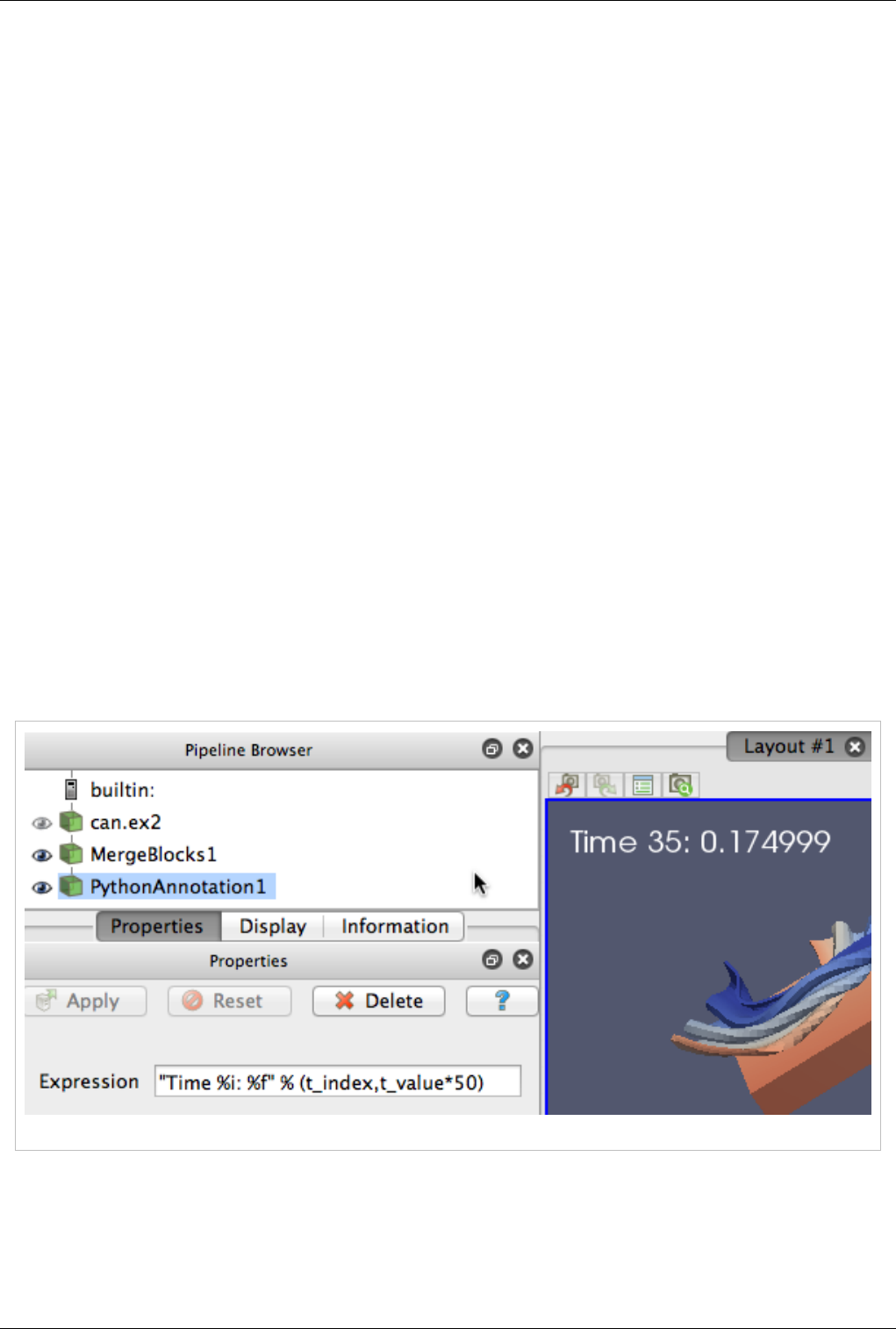

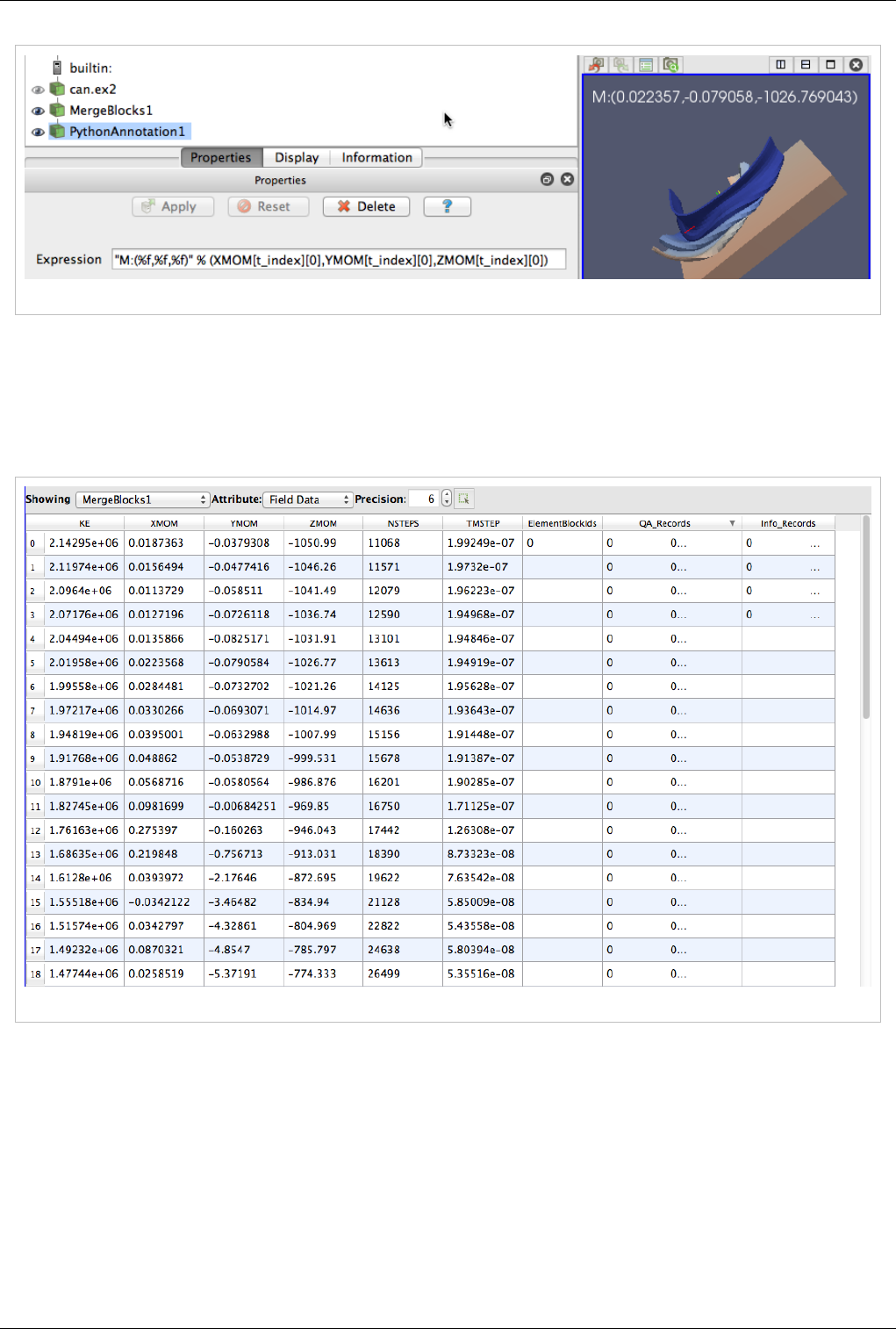

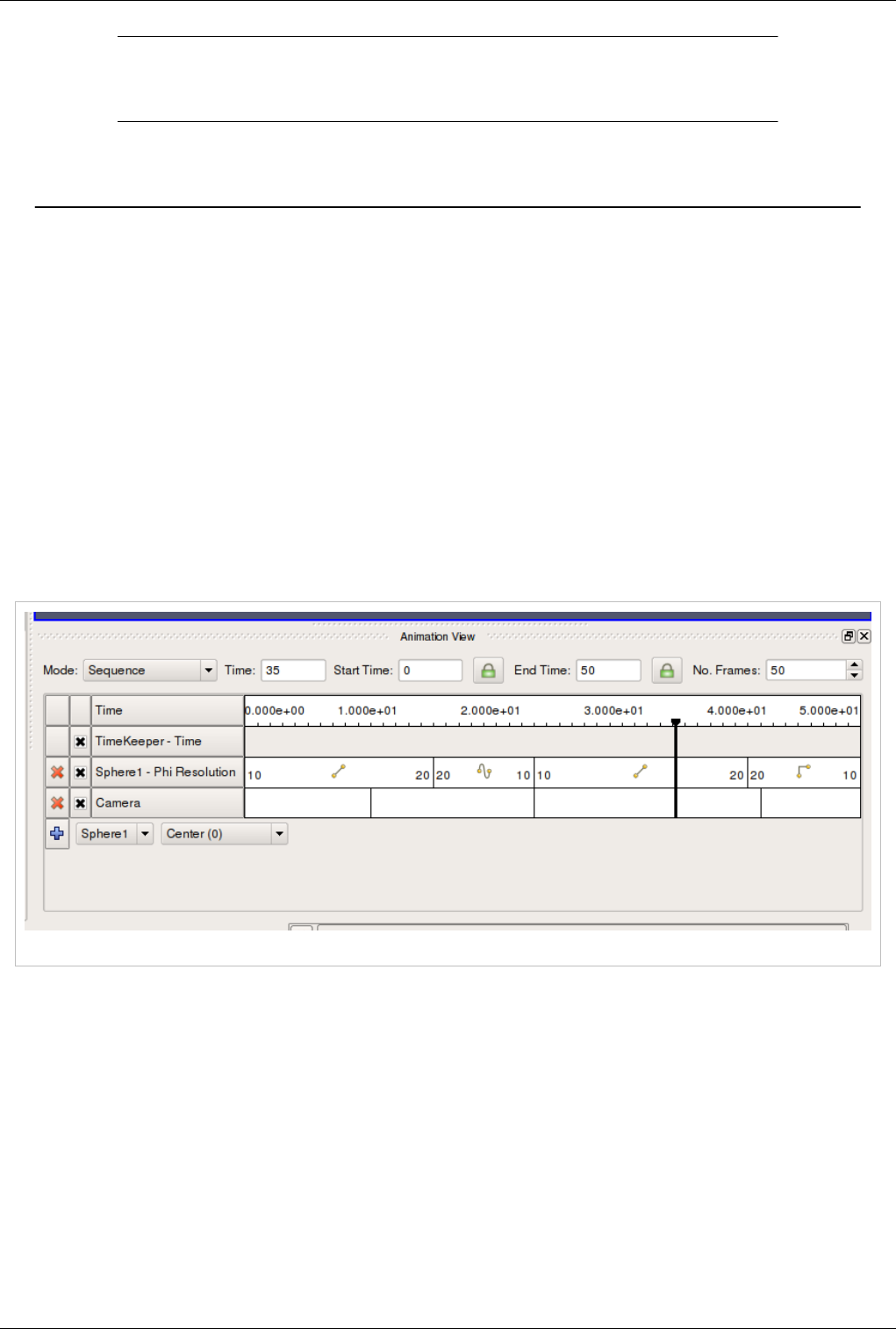

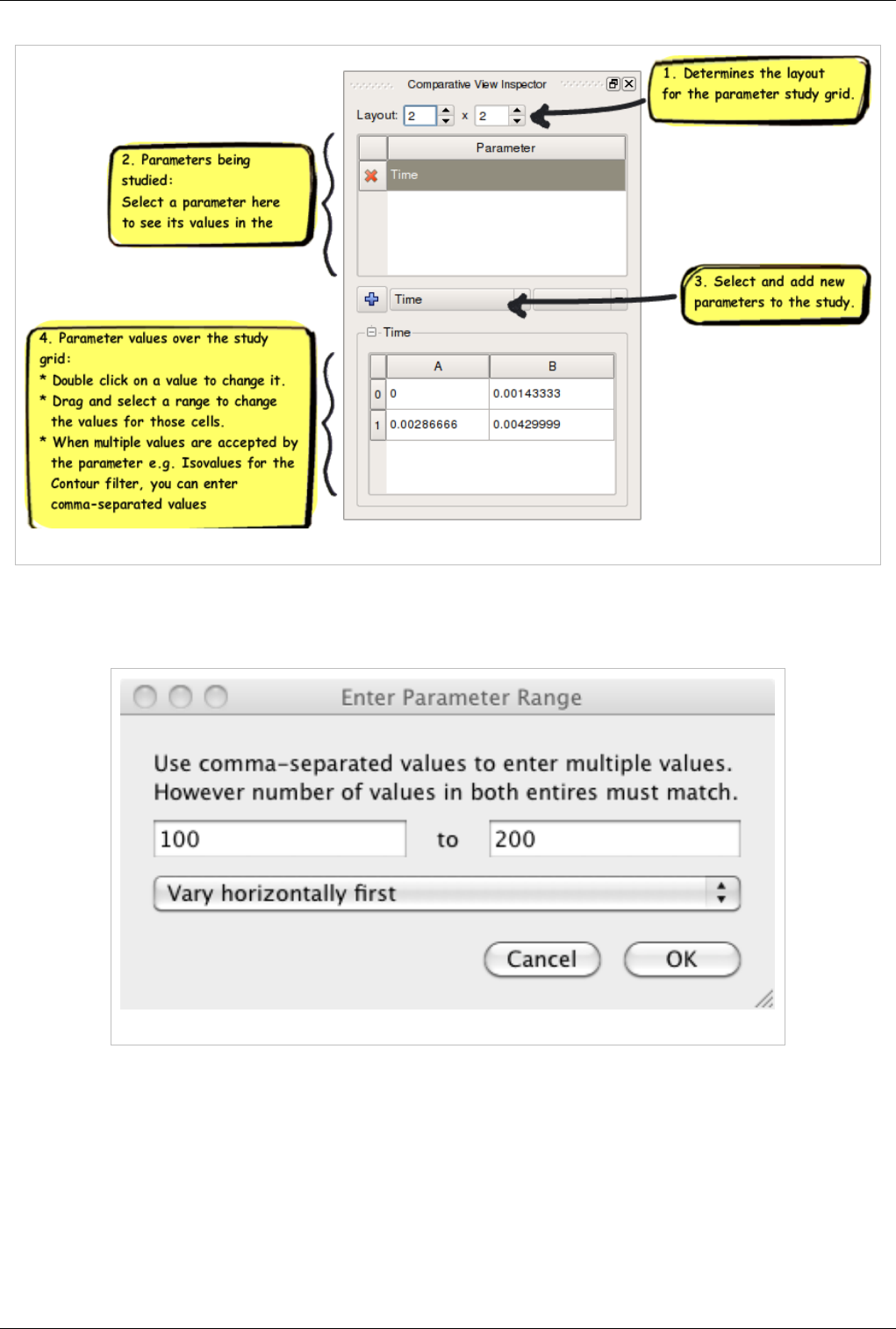

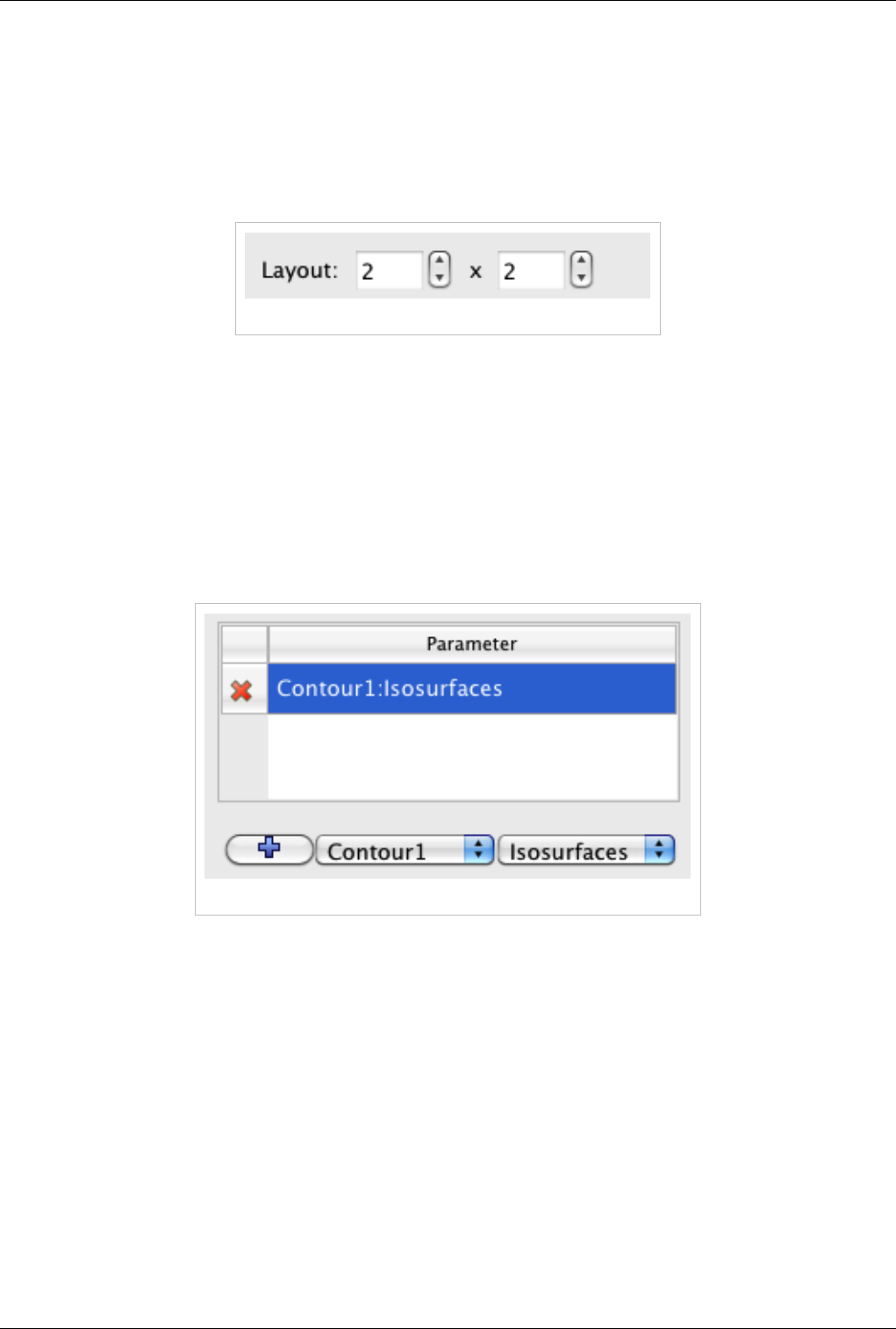

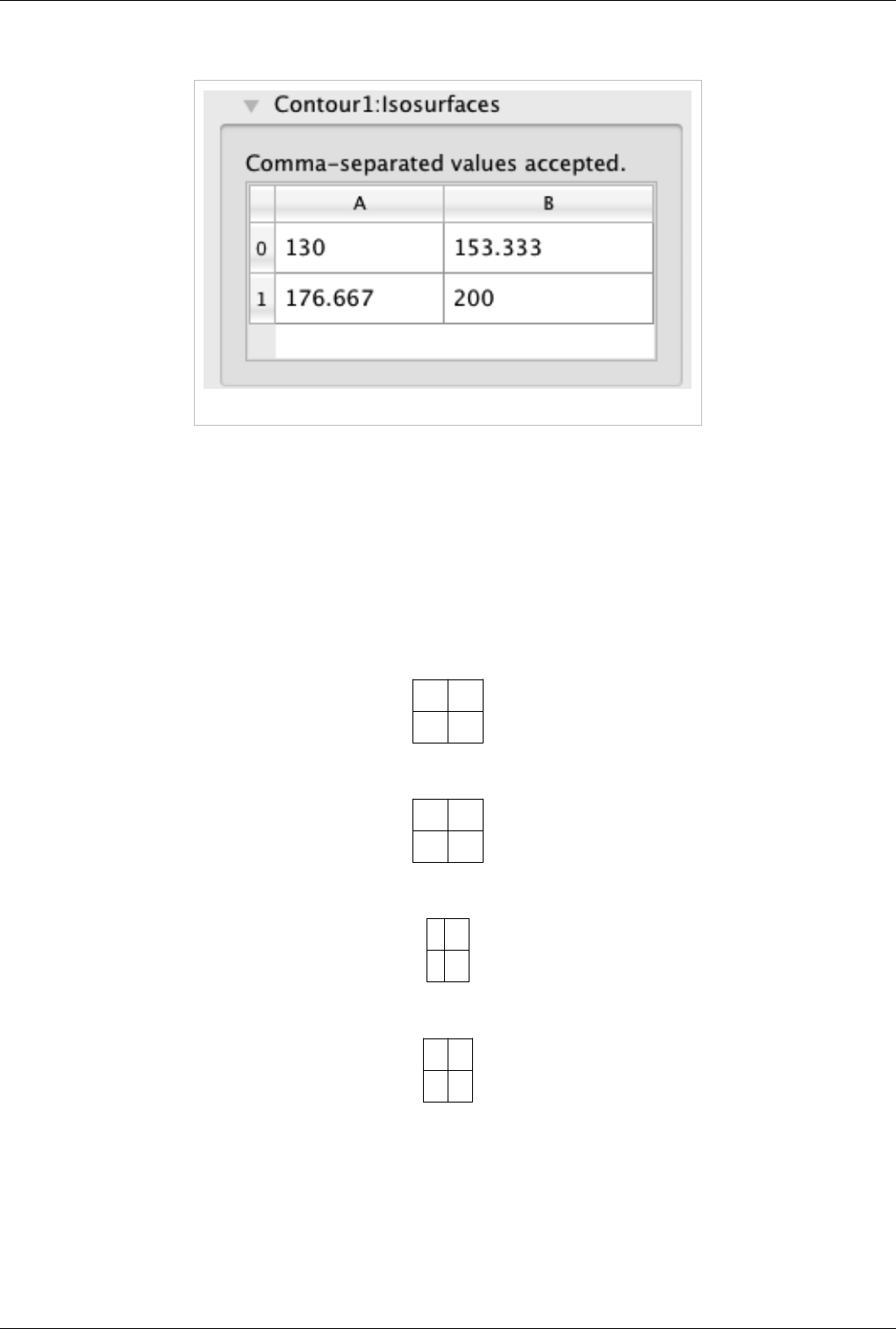

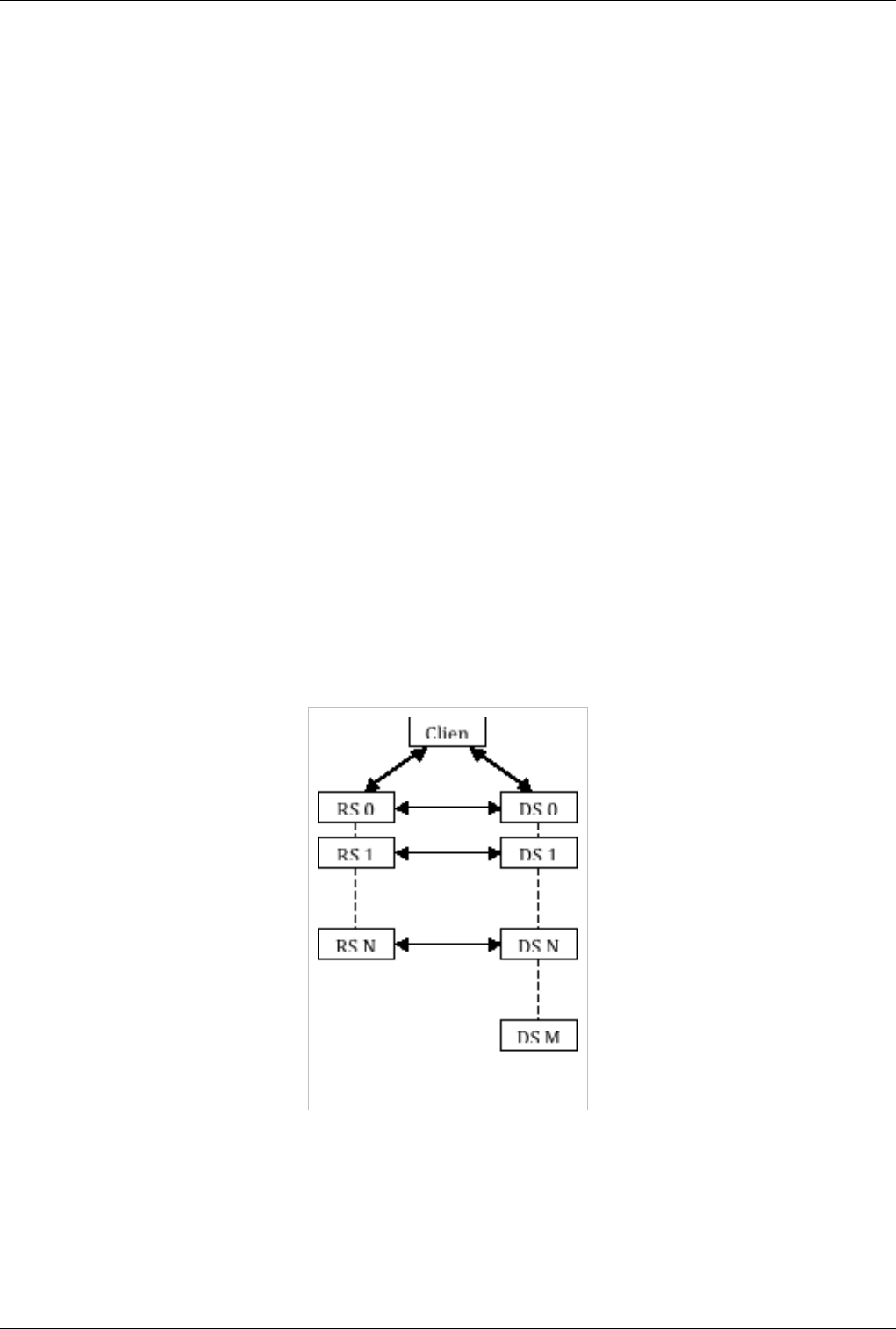

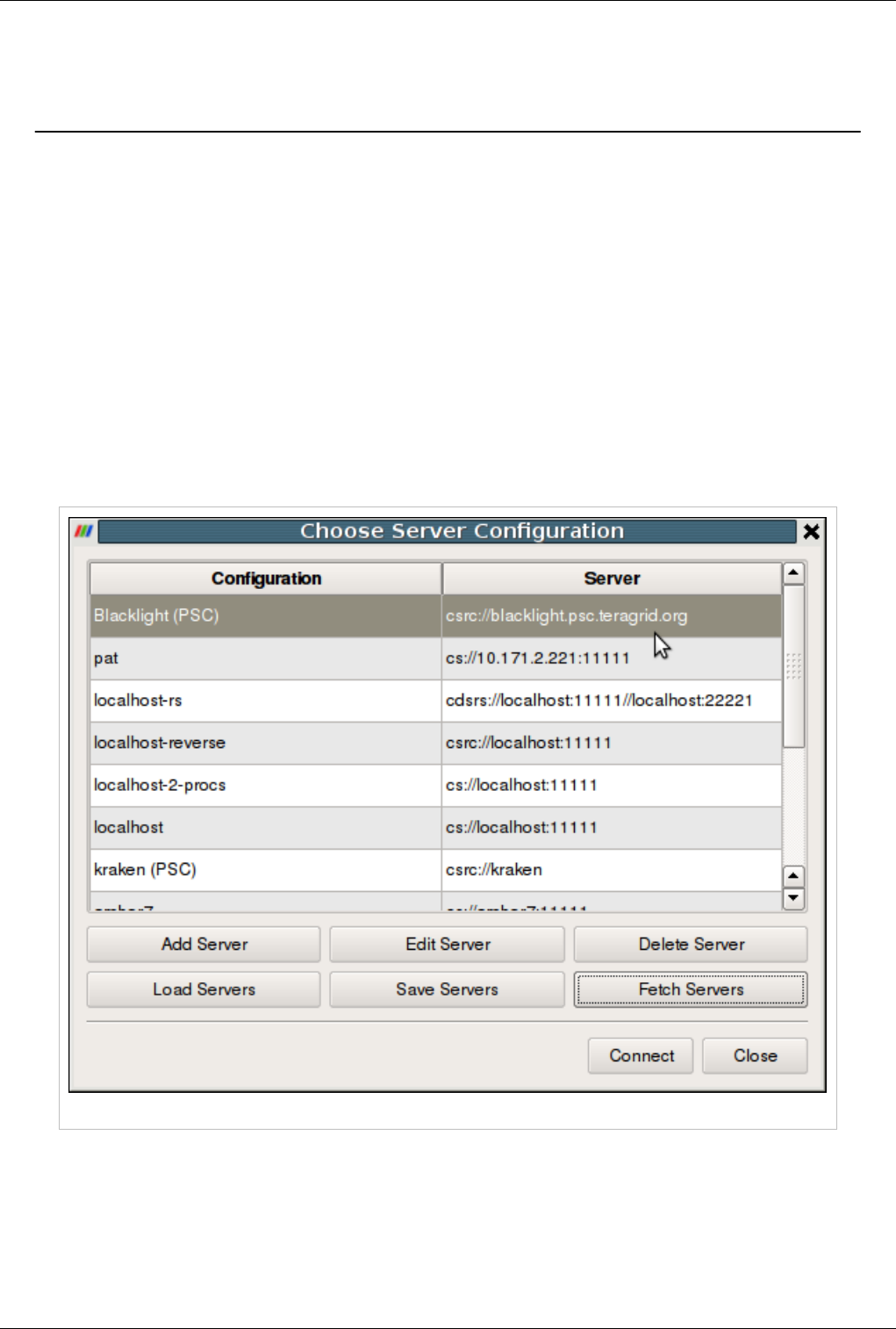

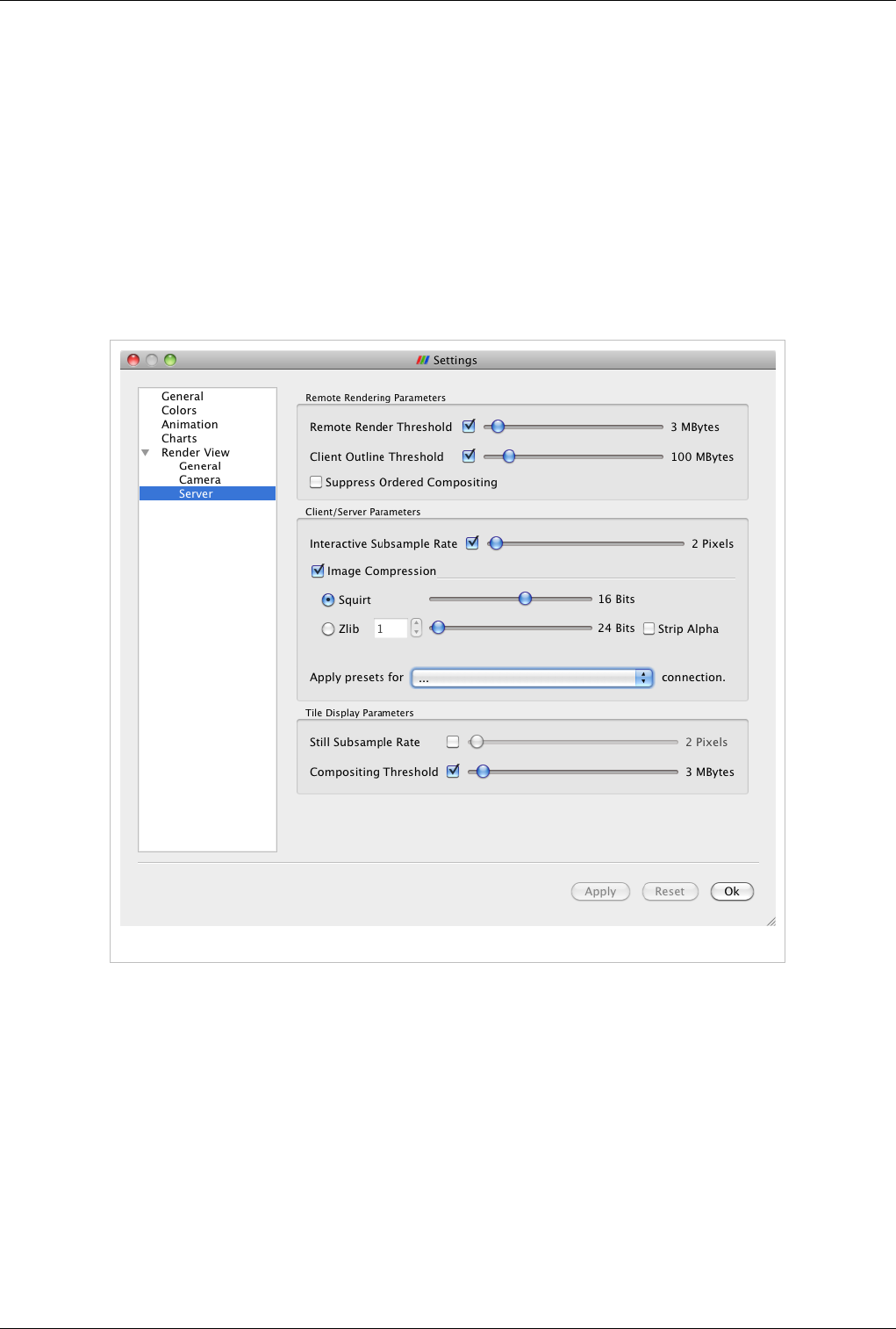

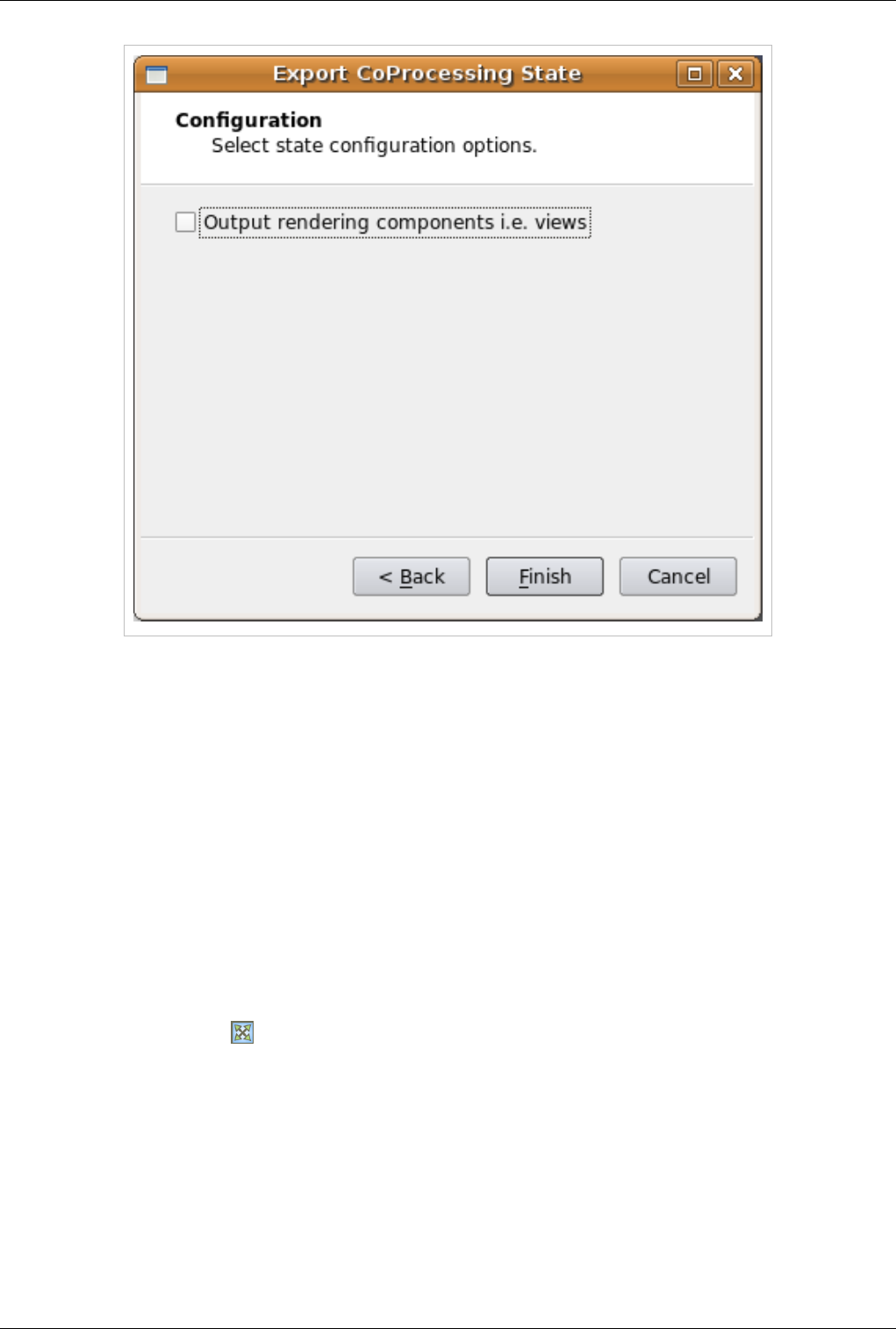

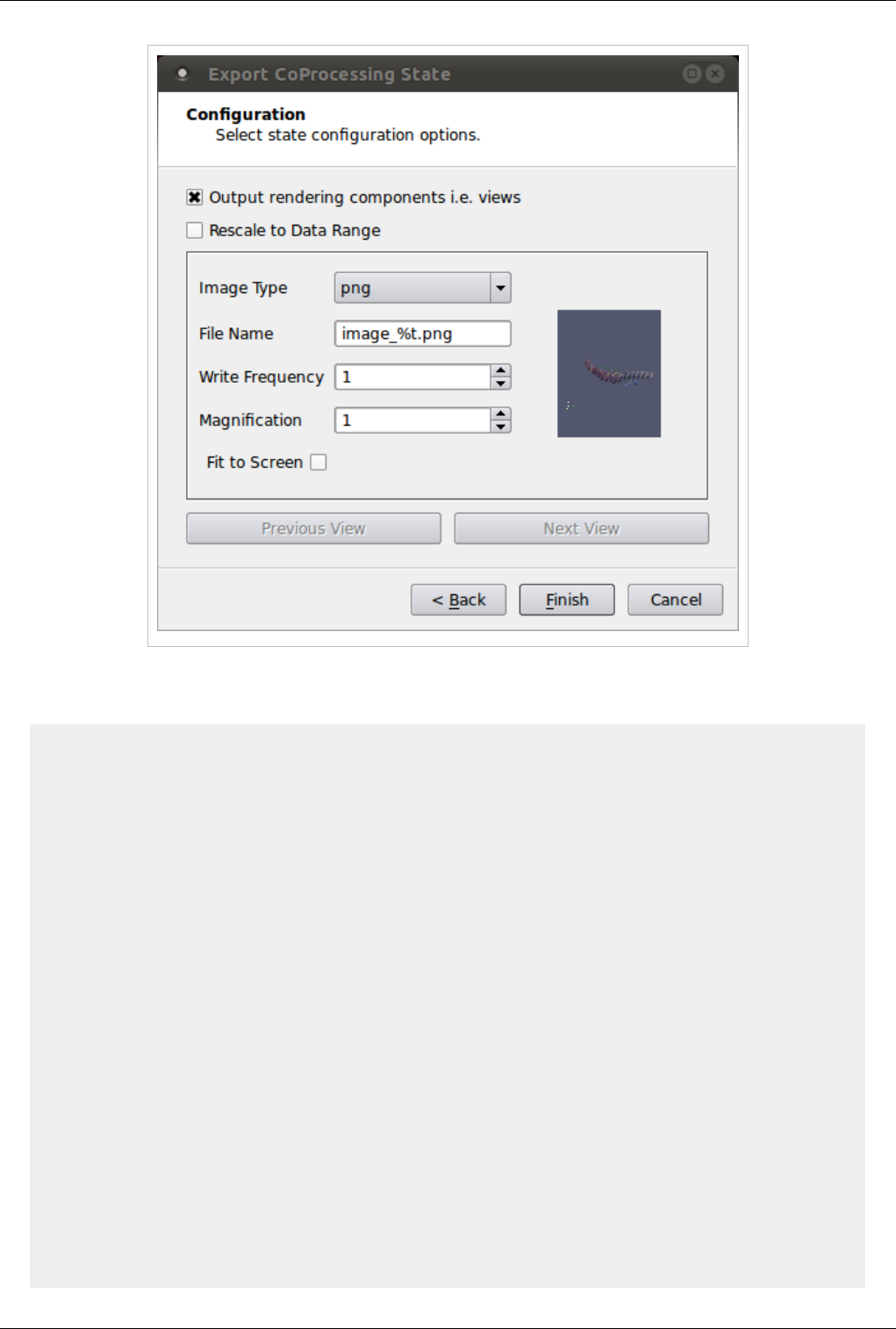

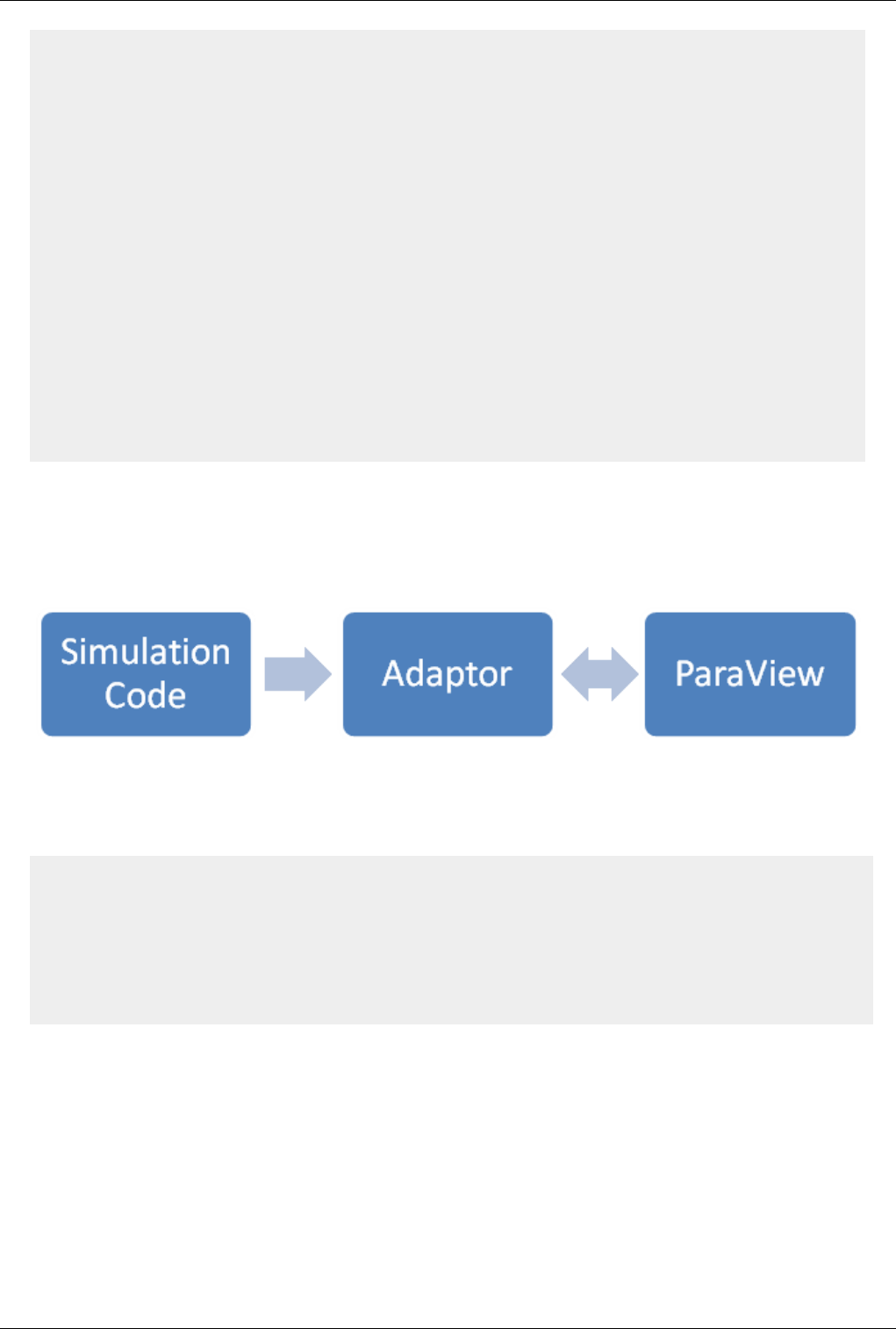

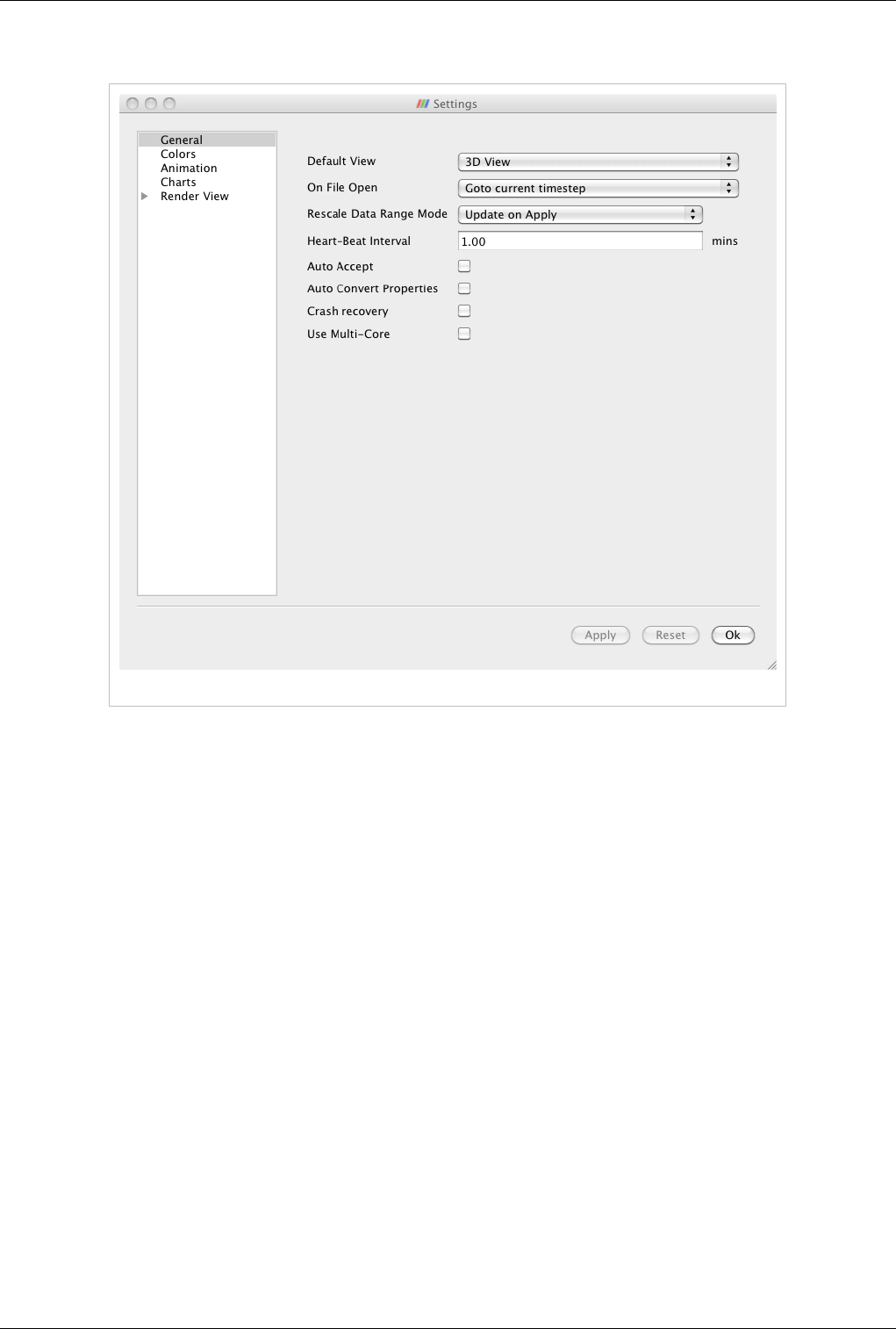

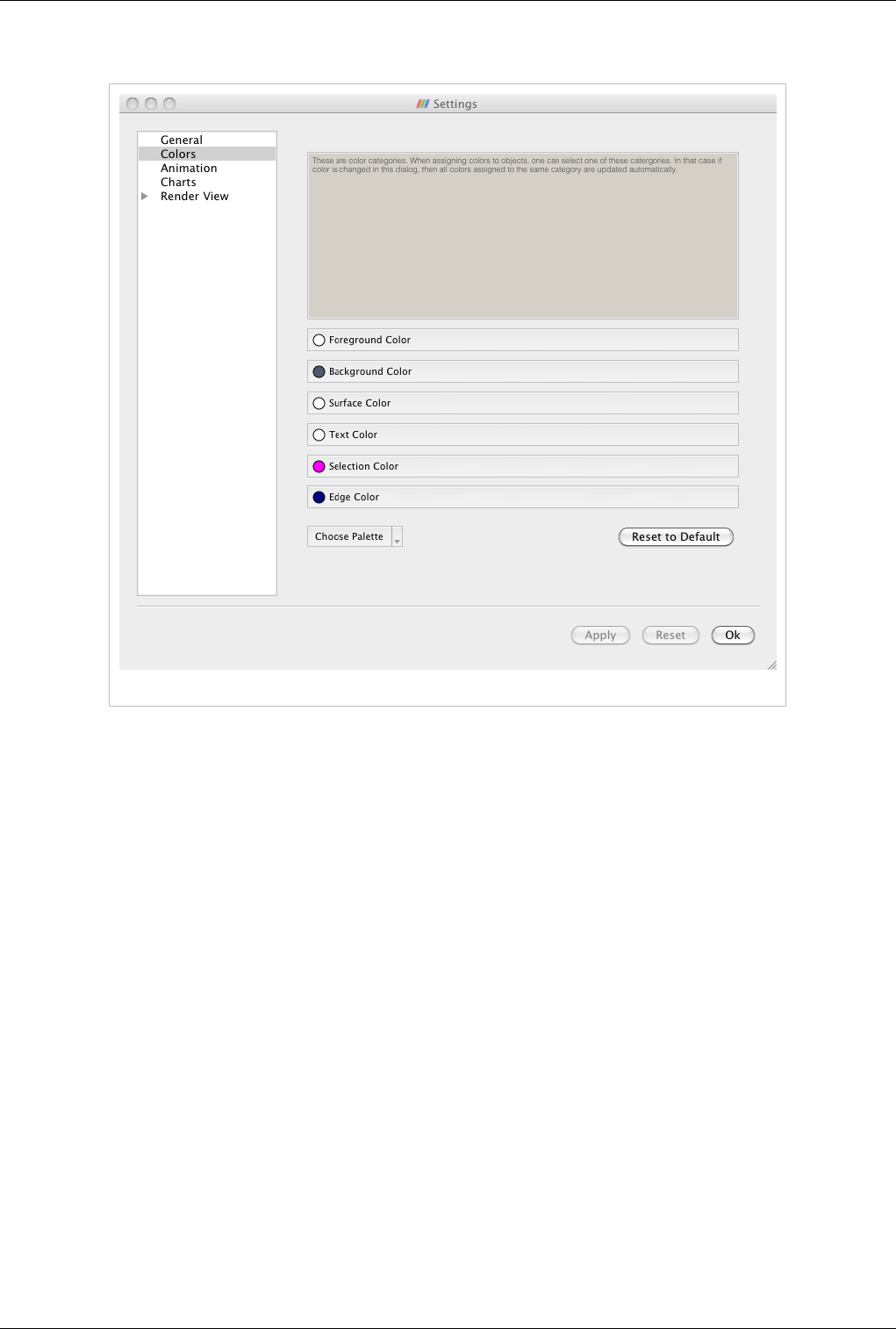

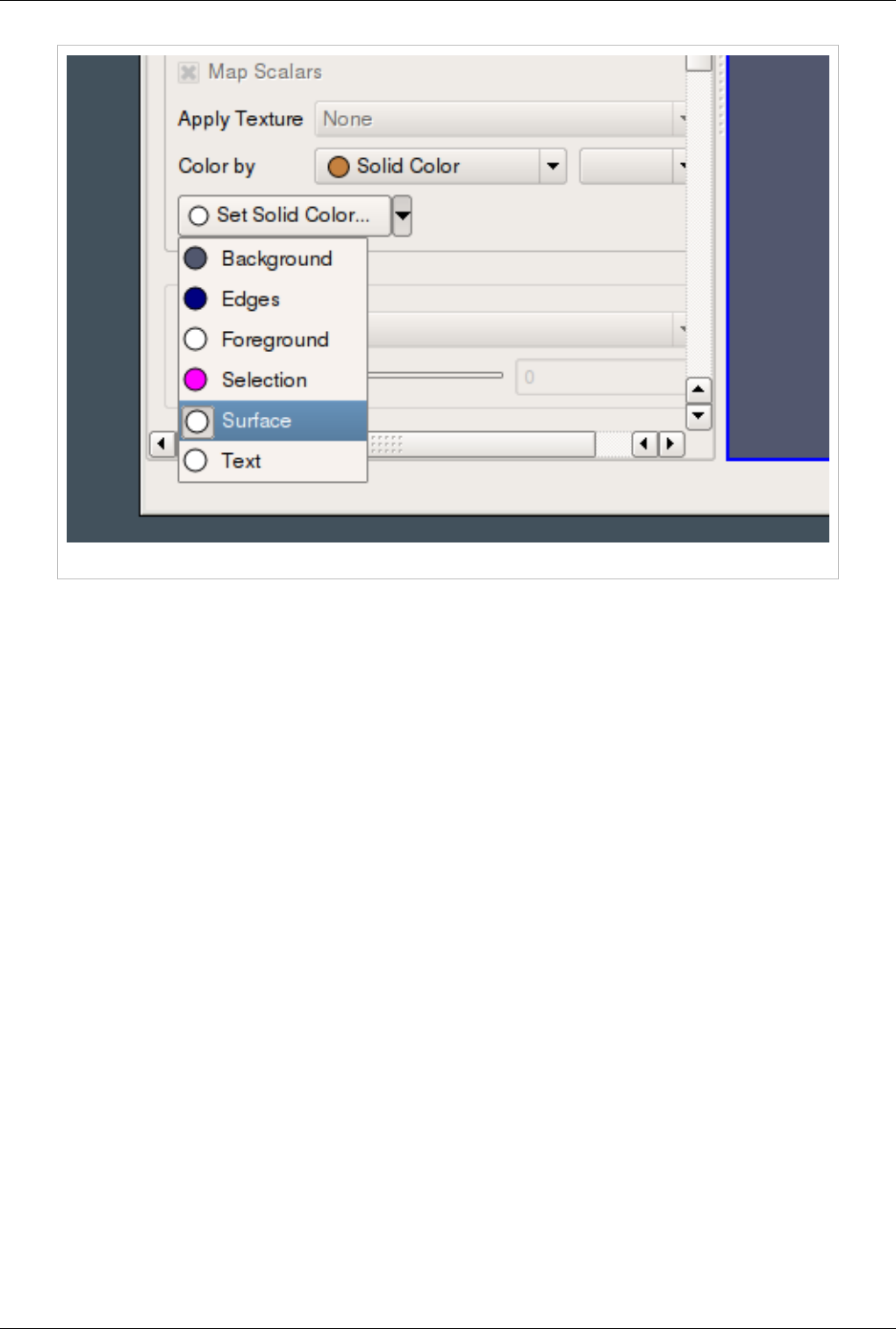

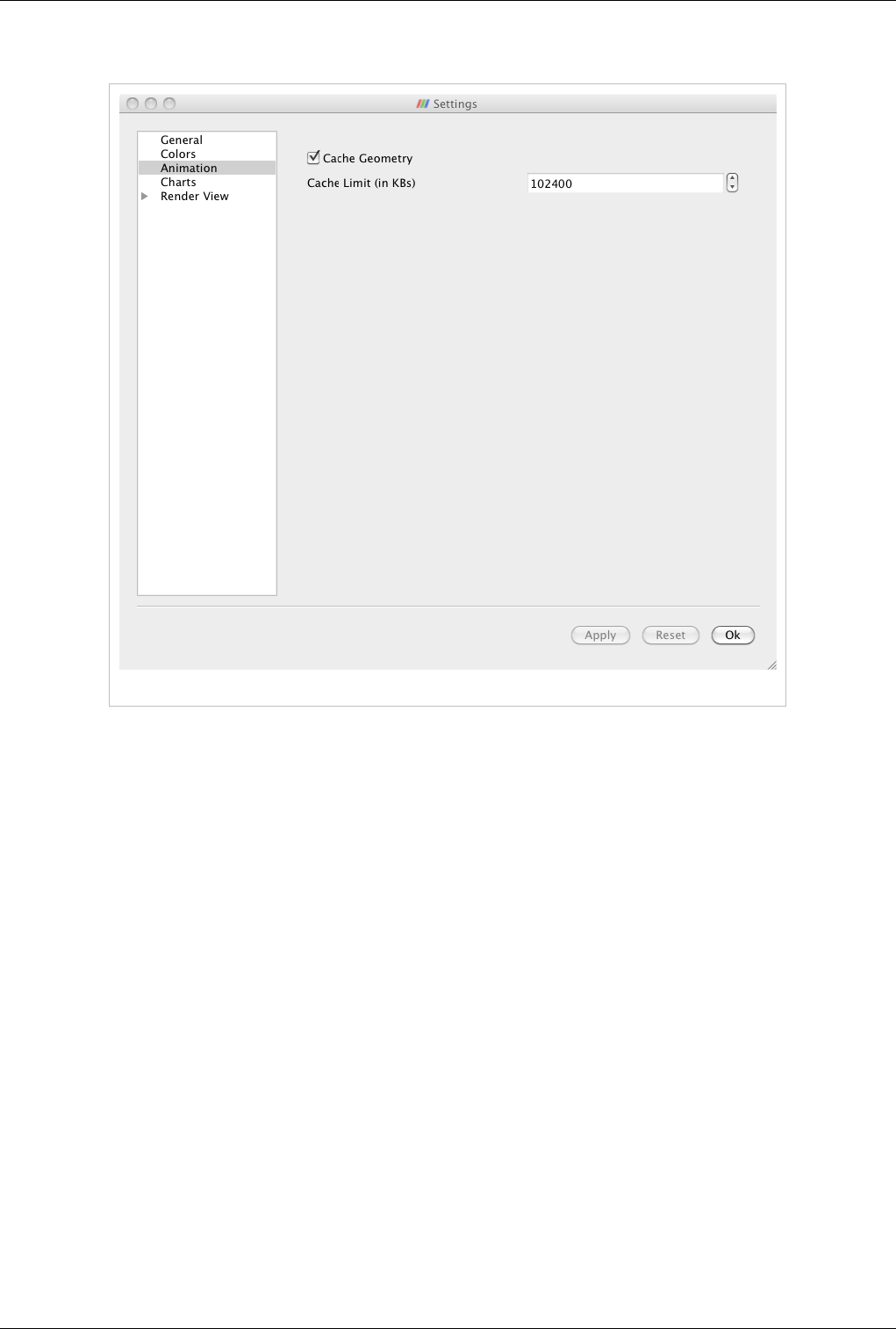

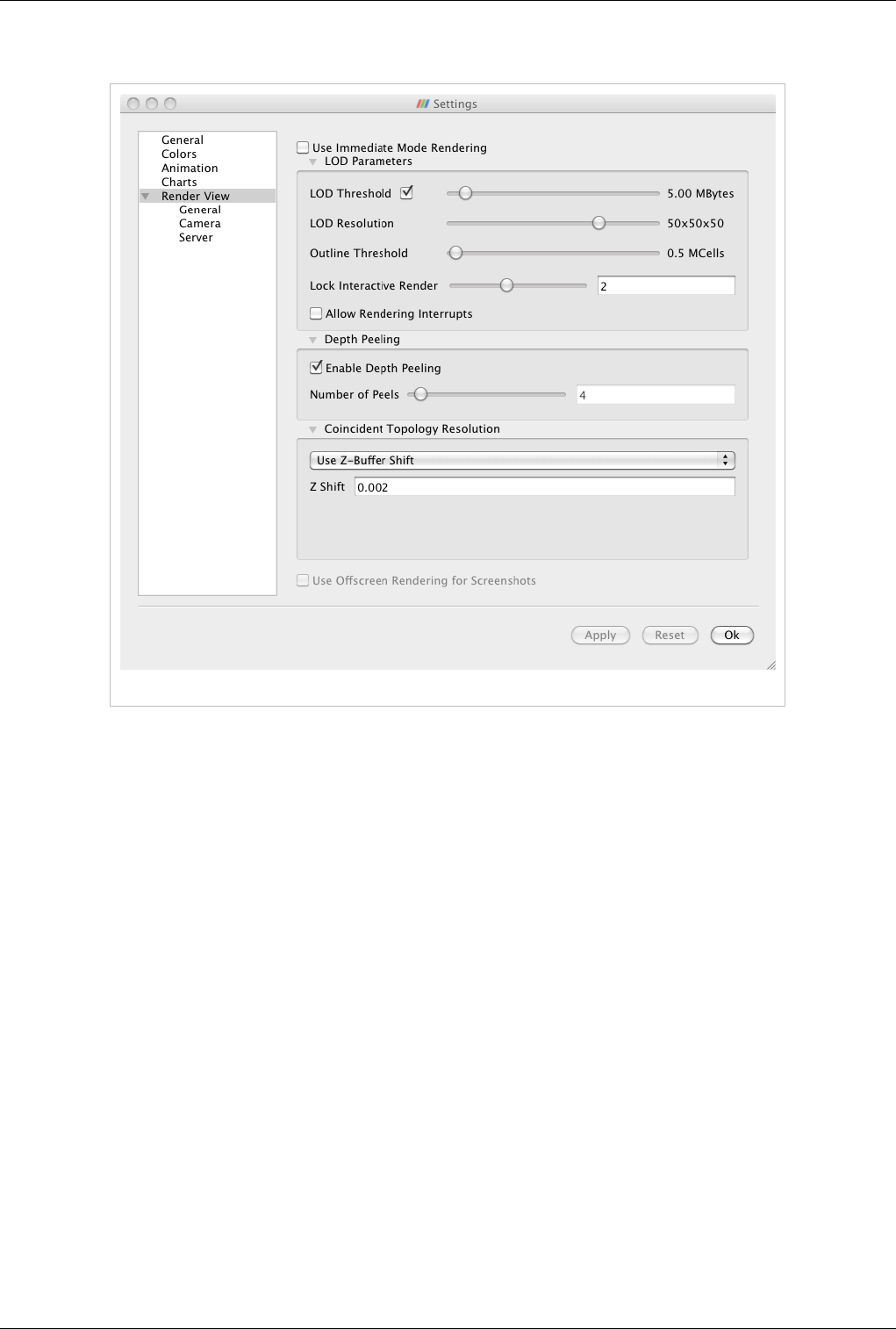

Interaction