Pro T SQL Programmer's Guide (4th Edition) Miguel Cebollero Apress

User Manual:

Open the PDF directly: View PDF ![]() .

.

Page Count: 727 [warning: Documents this large are best viewed by clicking the View PDF Link!]

- Contents at a Glance

- Contents

- About the Authors

- About the Technical Reviewer

- Acknowledgments

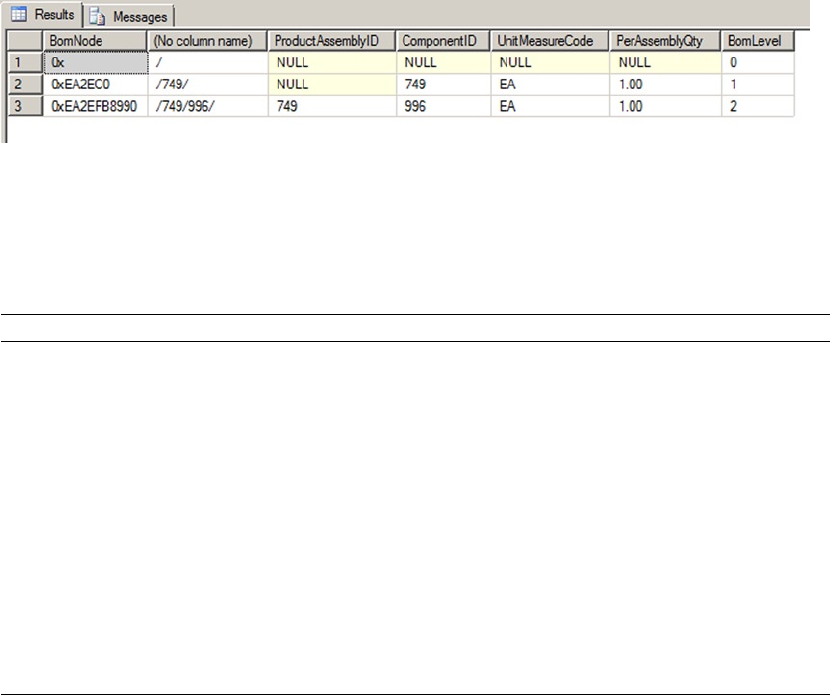

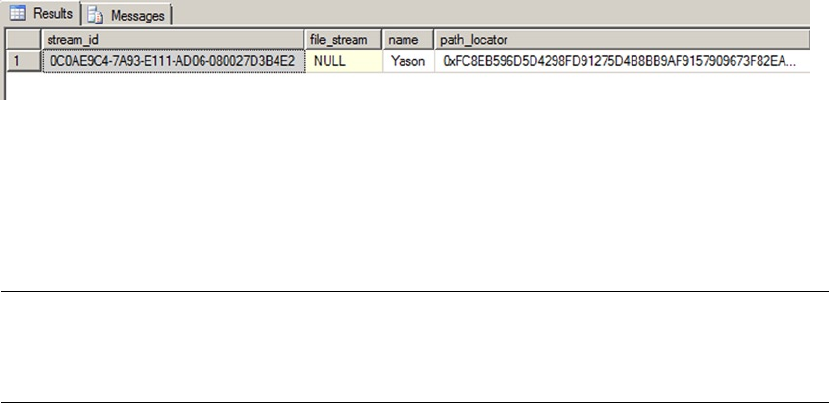

- Introduction

- Chapter 1: Foundations of T-SQL

- Chapter 2: Tools of the Trade

- Chapter 3: Procedural Code

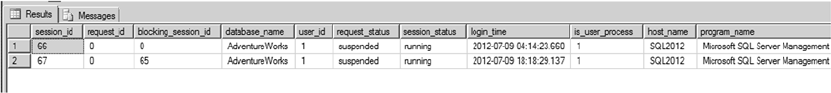

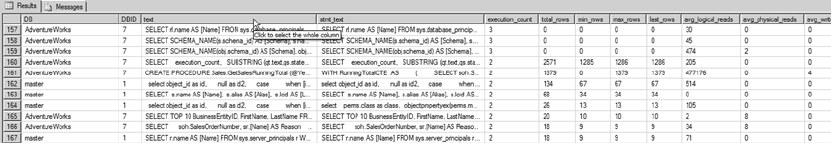

- Chapter 4: User-Defined Functions

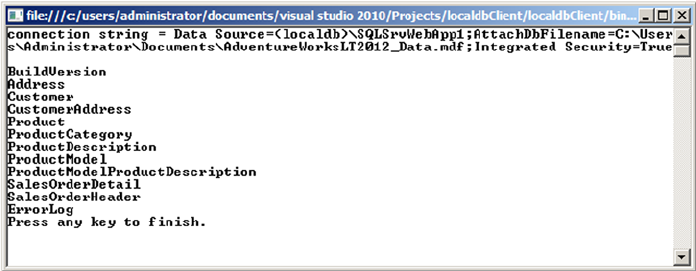

- Chapter 5: Stored Procedures

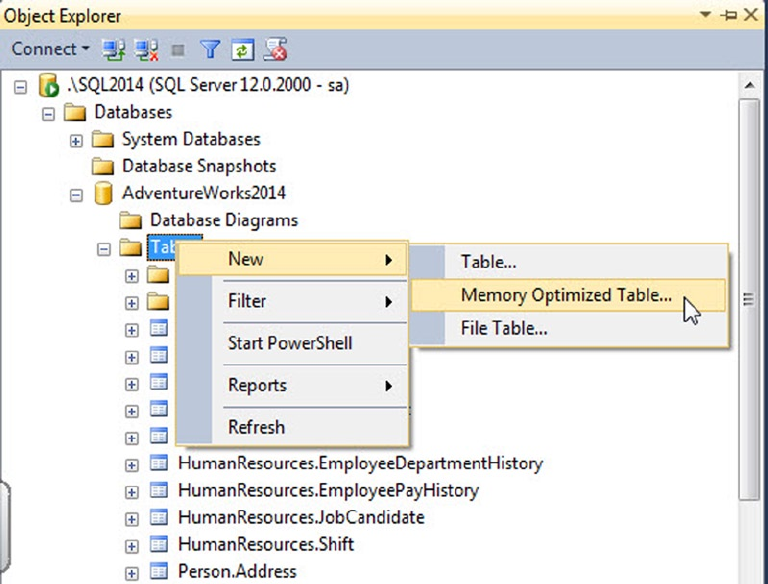

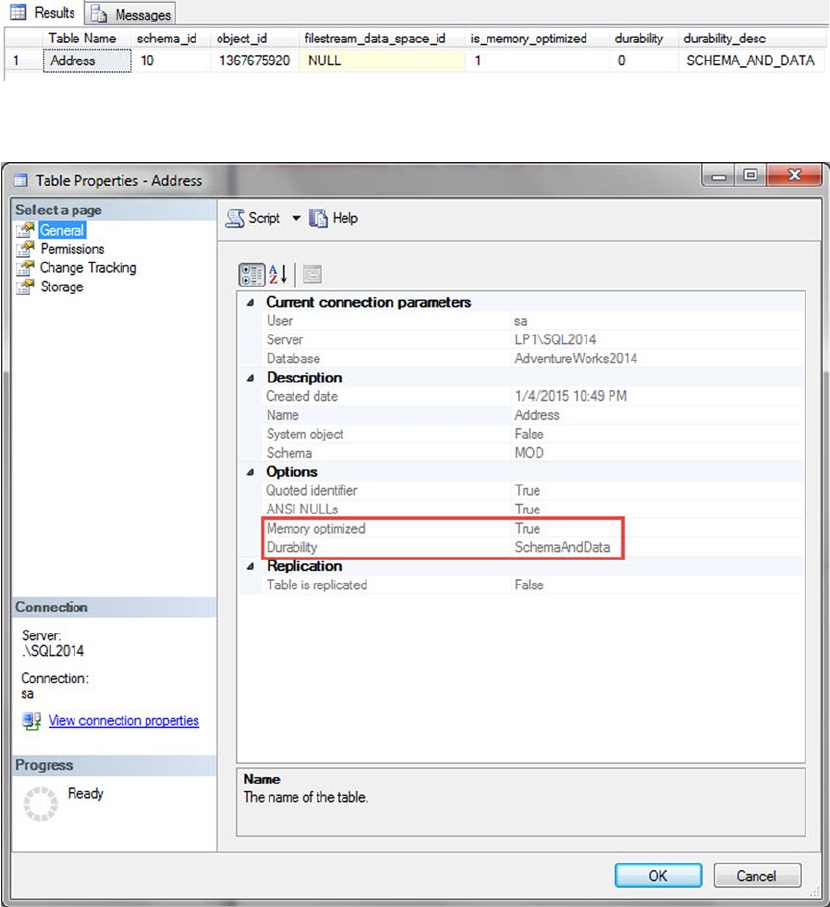

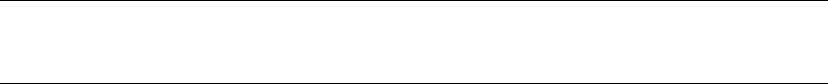

- Chapter 6: In-Memory Programming

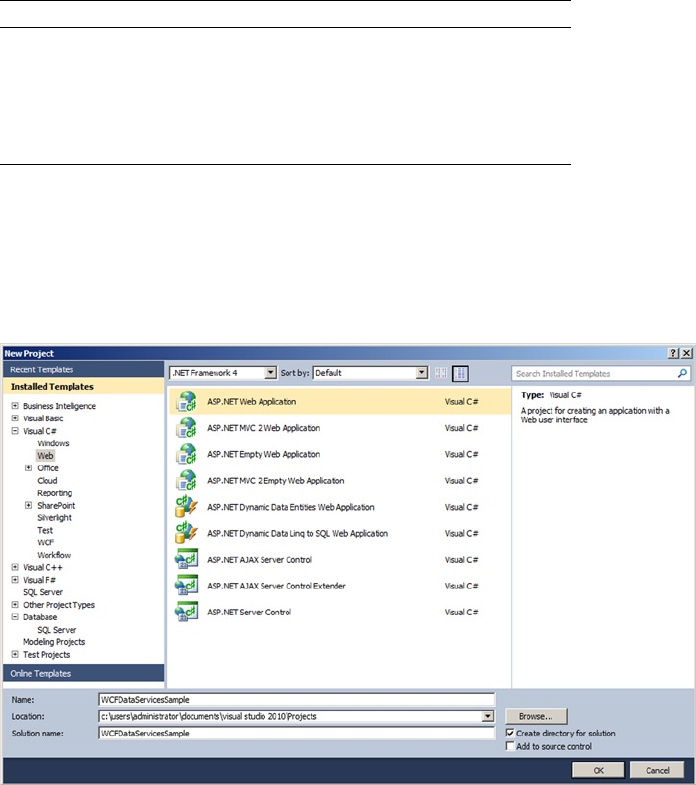

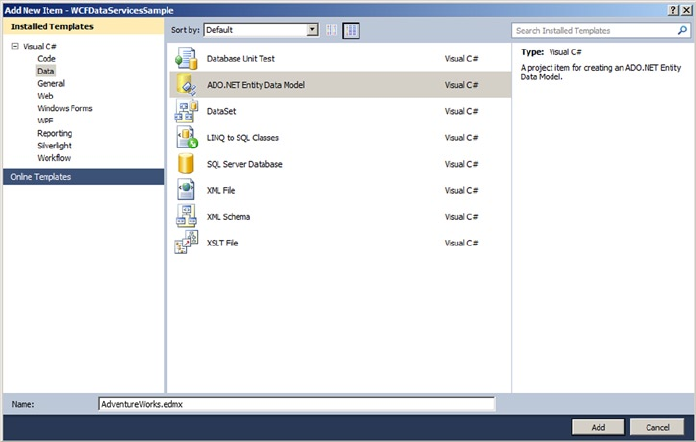

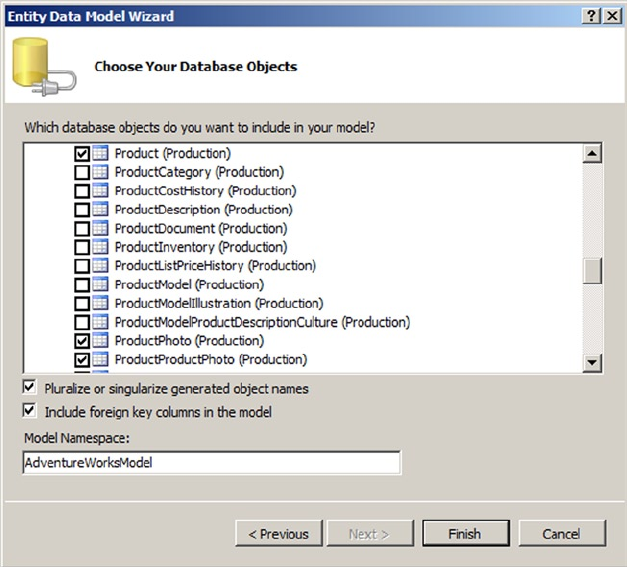

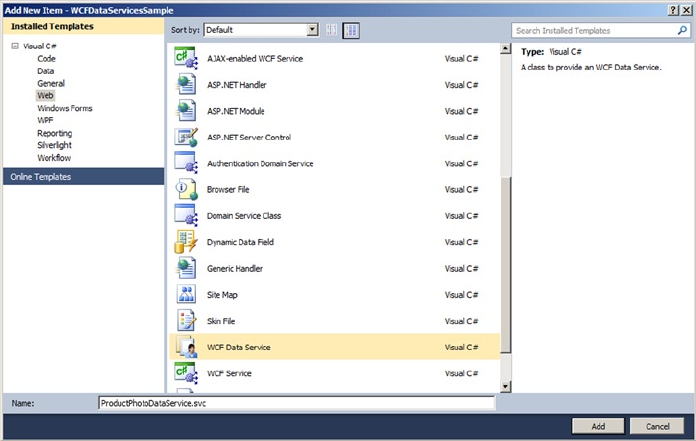

- Chapter 7: Triggers

- Chapter 8: Encryption

- Chapter 9: Common Table Expressions and Windowing Functions

- Chapter 10: Data Types and Advanced Data Types

- Chapter 11: Full-Text Search

- FTS Architecture

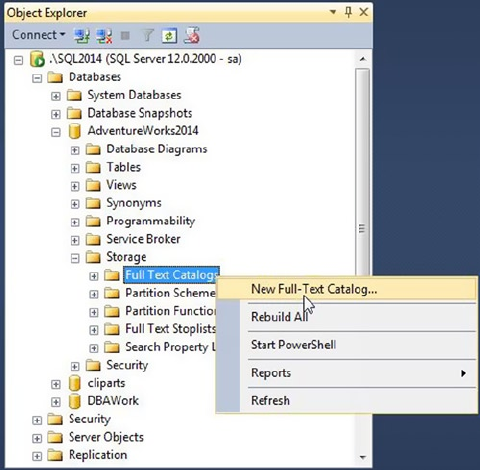

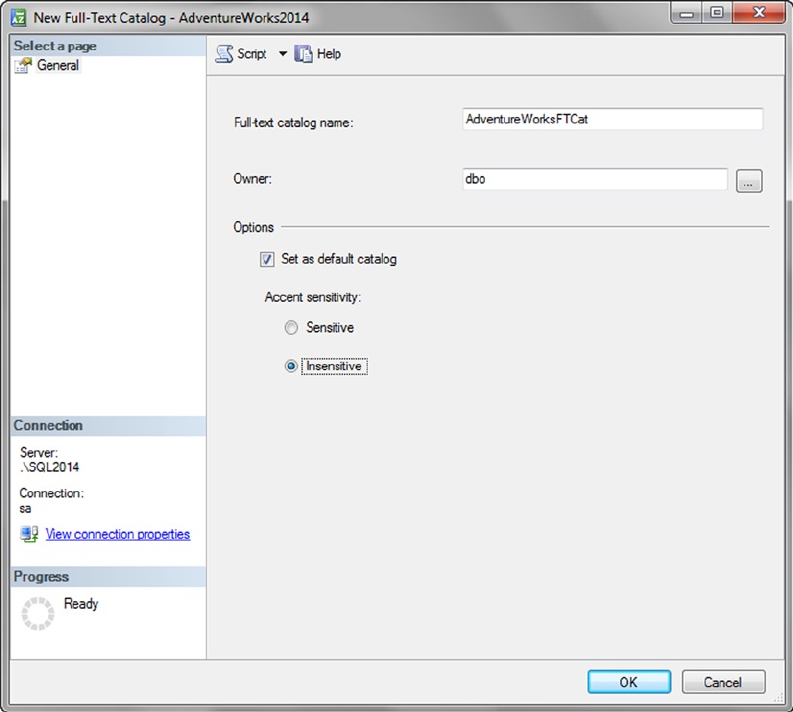

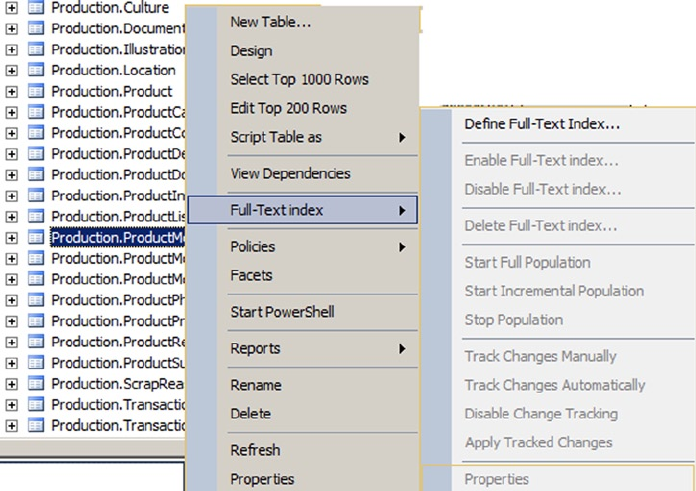

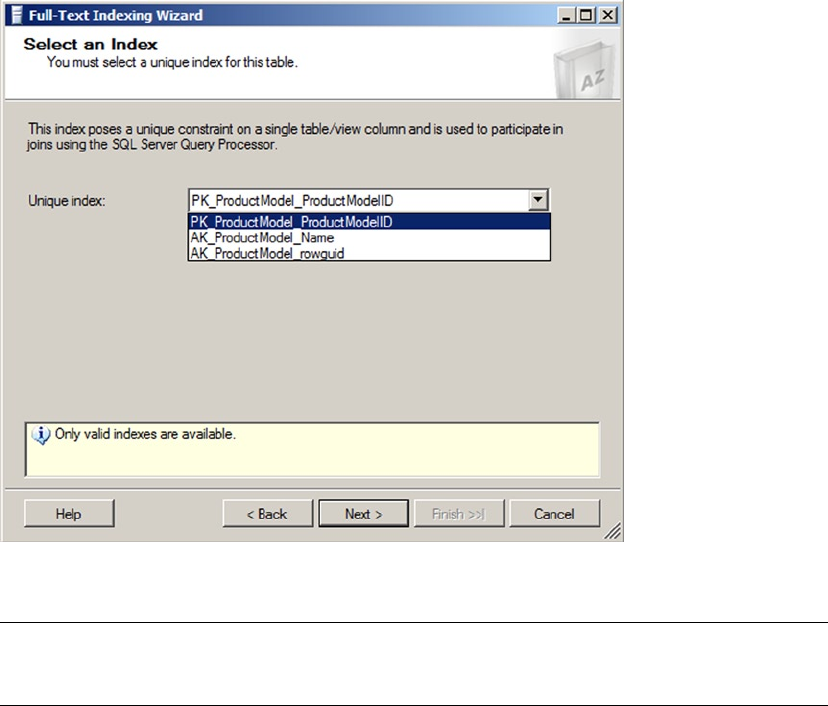

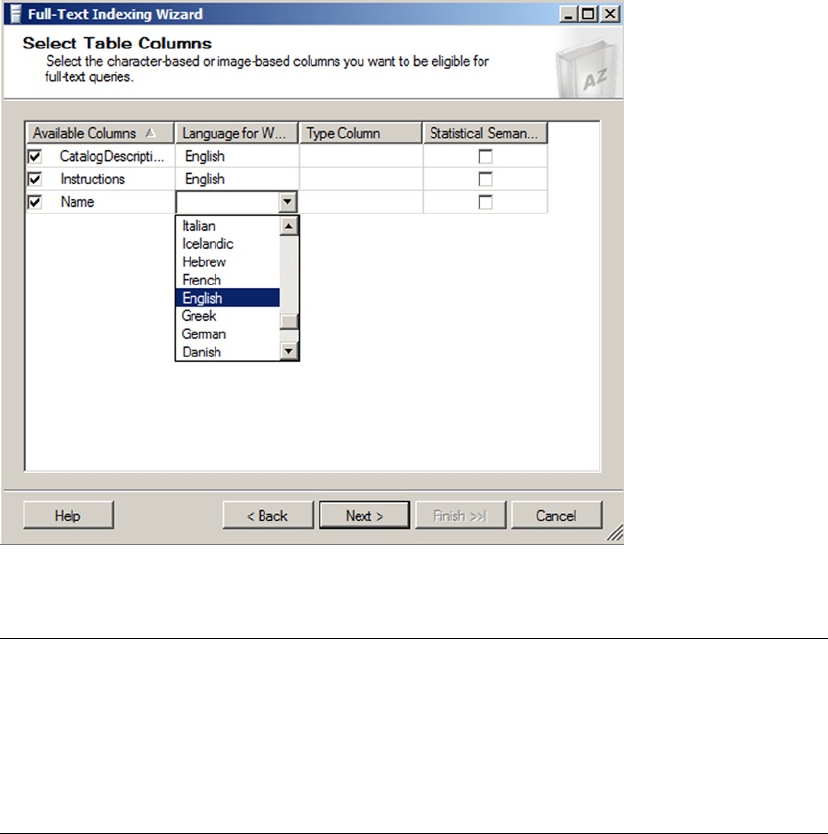

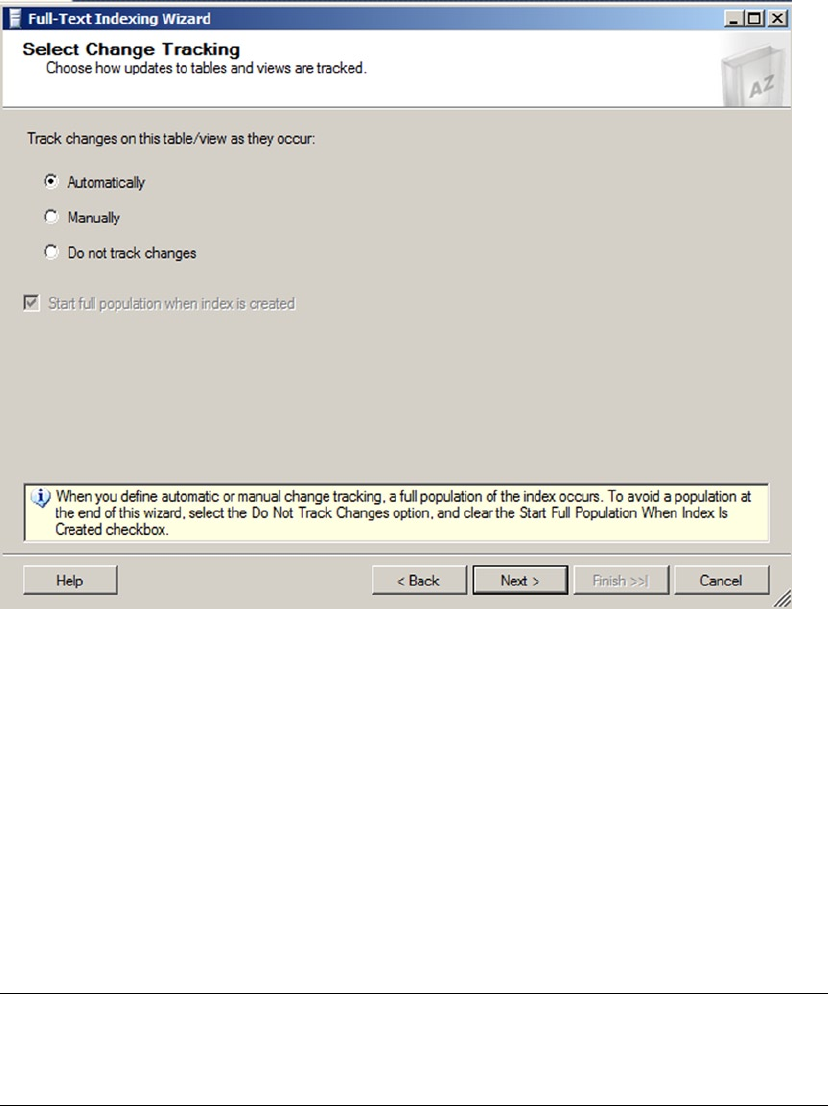

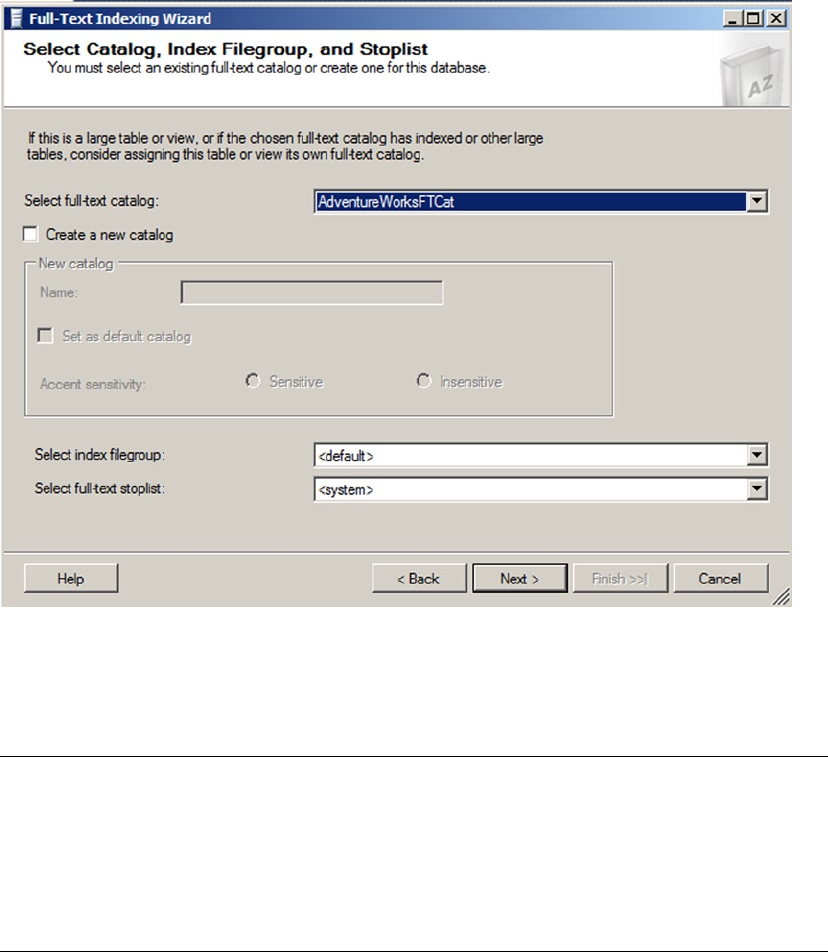

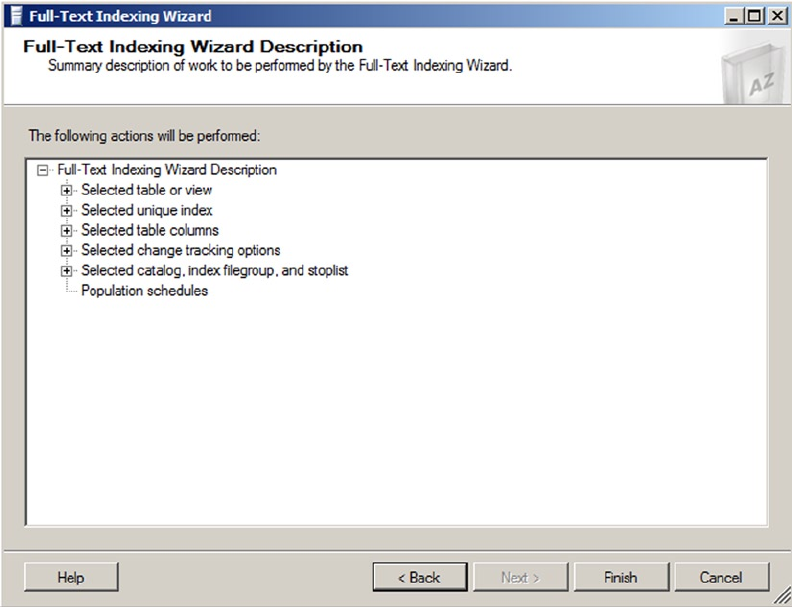

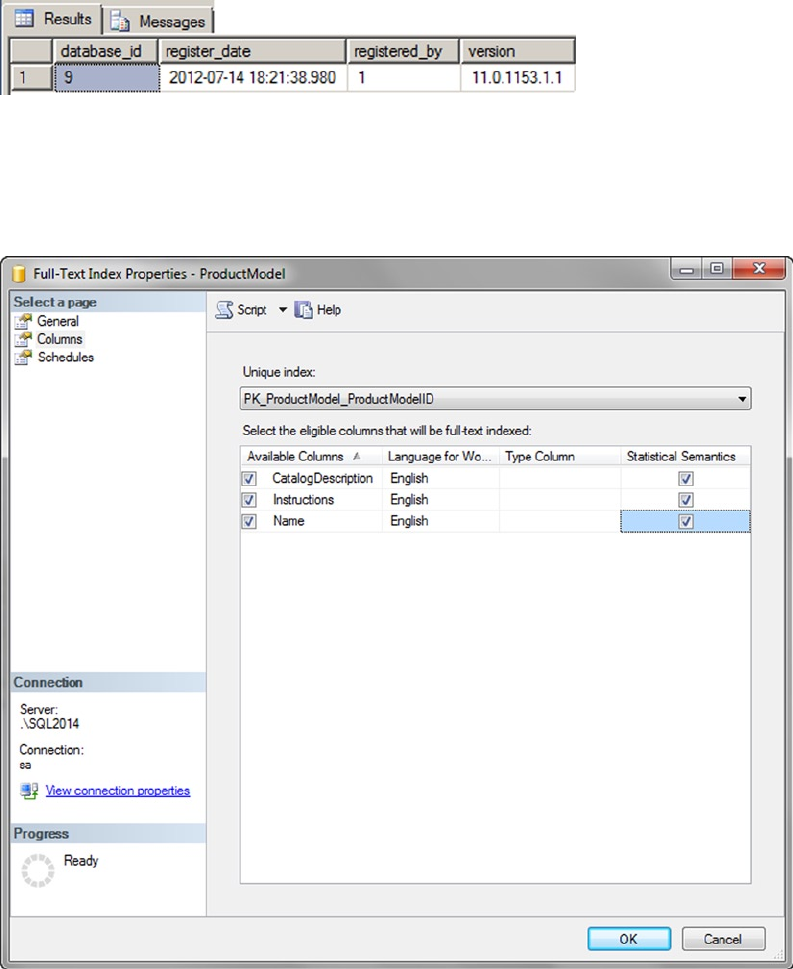

- Creating Full-Text Catalogs and Indexes

- Creating Full-Text Catalogs

- Creating Full-Text Indexes

- Full-Text Querying

- The FREETEXT Predicate

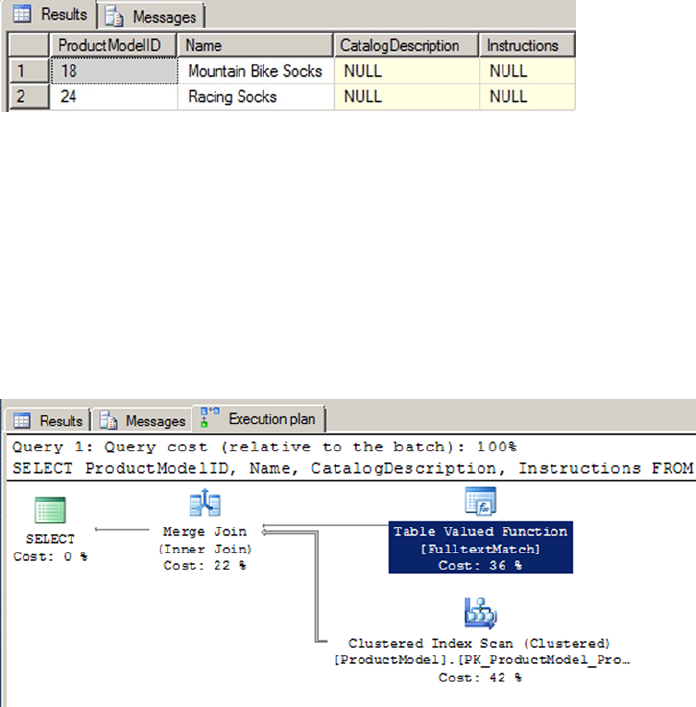

- FTS Performance Optimization

- The CONTAINS Predicate

- The FREETEXTTABLE and CONTAINSTABLE Functions

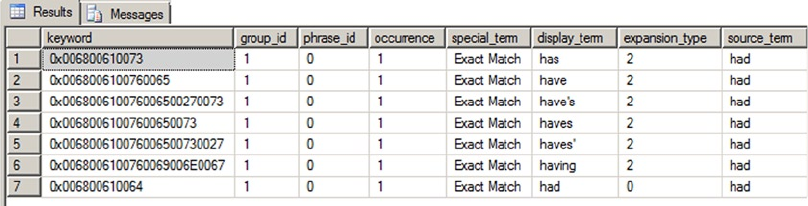

- Thesauruses and Stoplists

- Stored Procedures and Dynamic Management Views and Functions

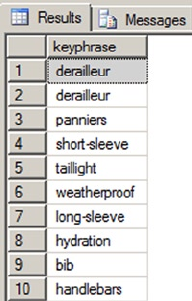

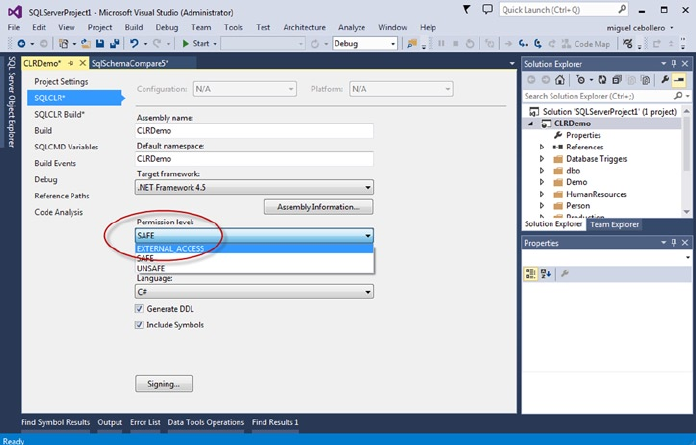

- Statistical Semantics

- Summary

- FTS Architecture

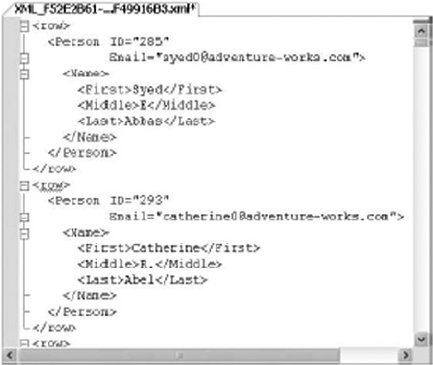

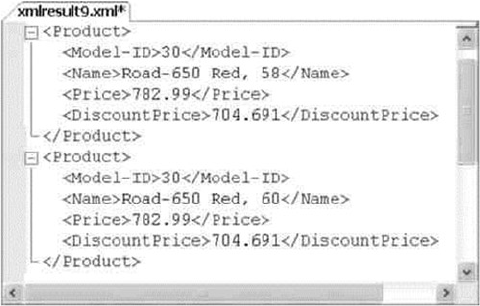

- Chapter 12: XML

- Legacy XML

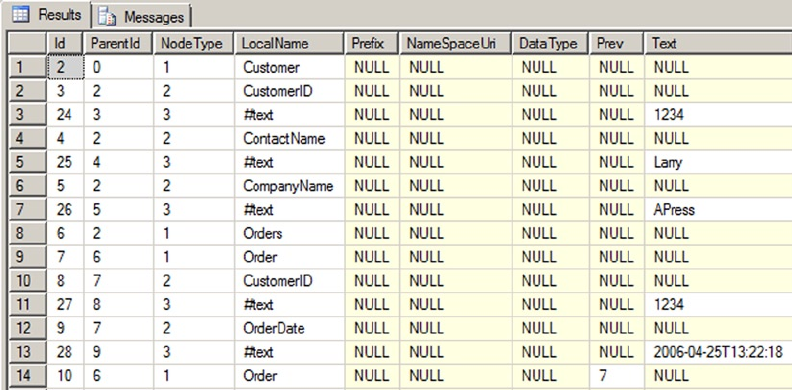

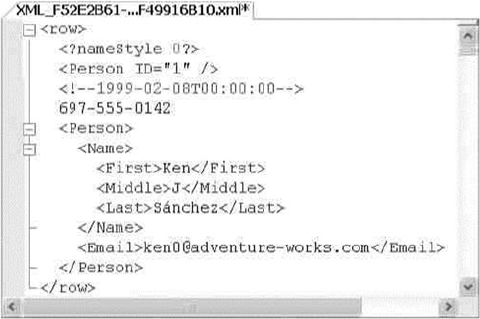

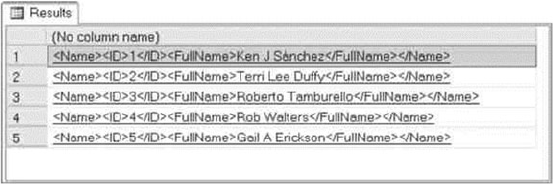

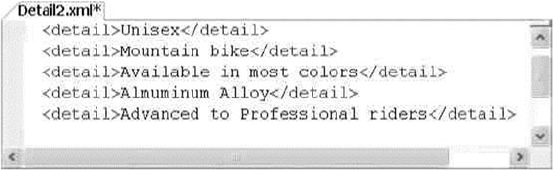

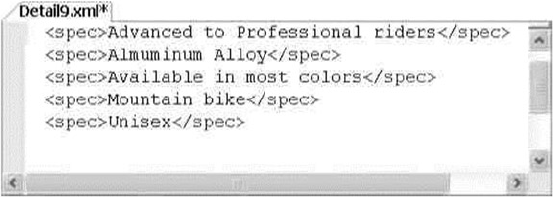

- OPENXML

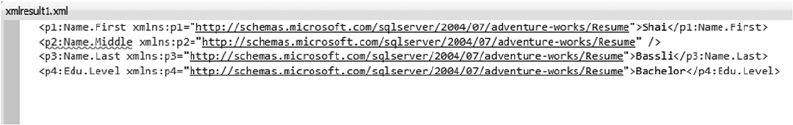

- OPENXML Result Formats

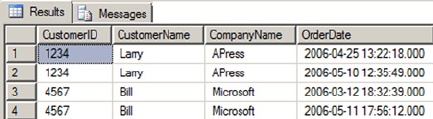

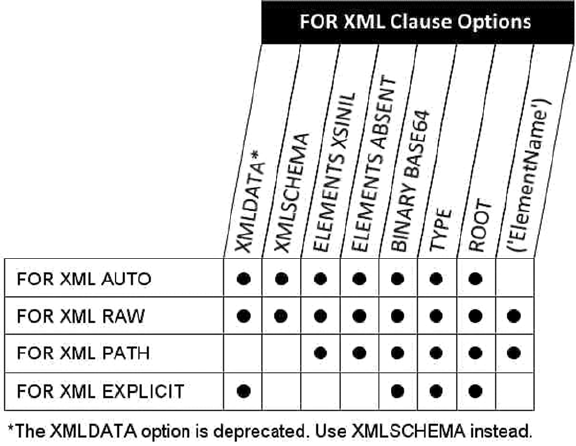

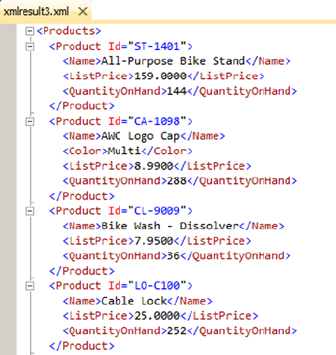

- FOR XML Clause

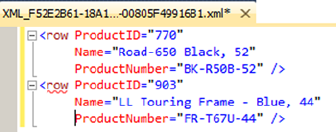

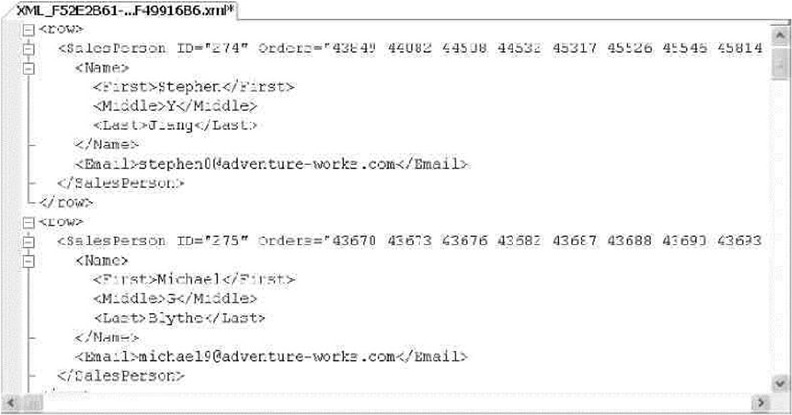

- FOR XML RAW

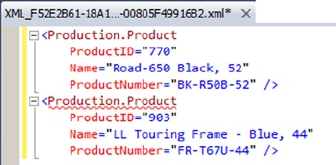

- FOR XML AUTO

- FOR XML EXPLICIT

- FOR XML PATH

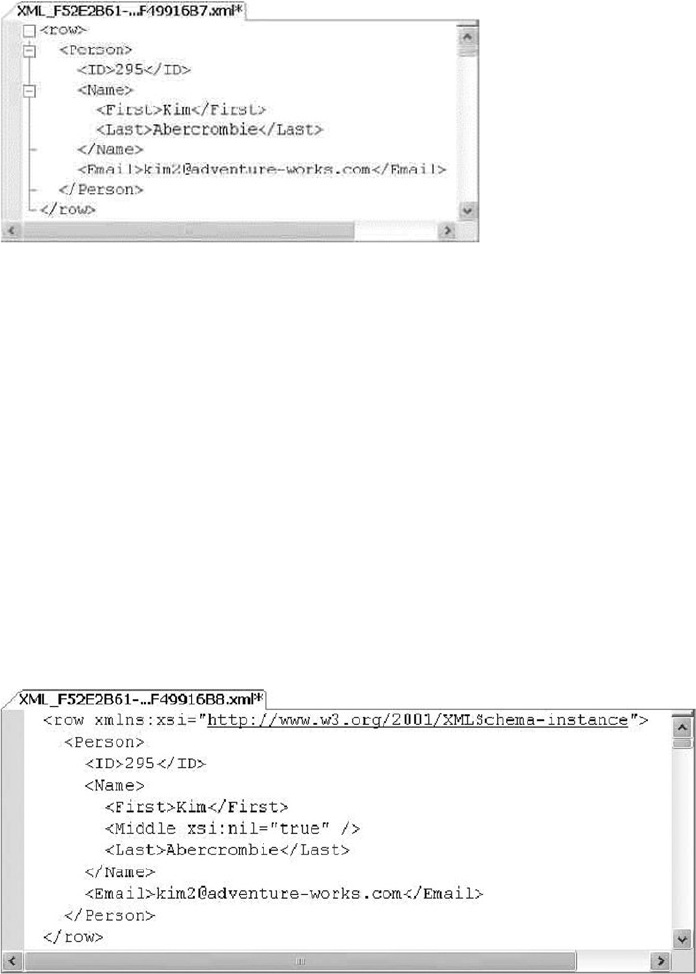

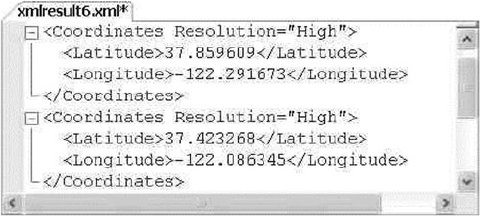

- The xml Data Type

- Untyped xml

- Typed xml

- The xml Data Type Methods

- The query Method

- The value Method

- The exist Method

- The nodes Method

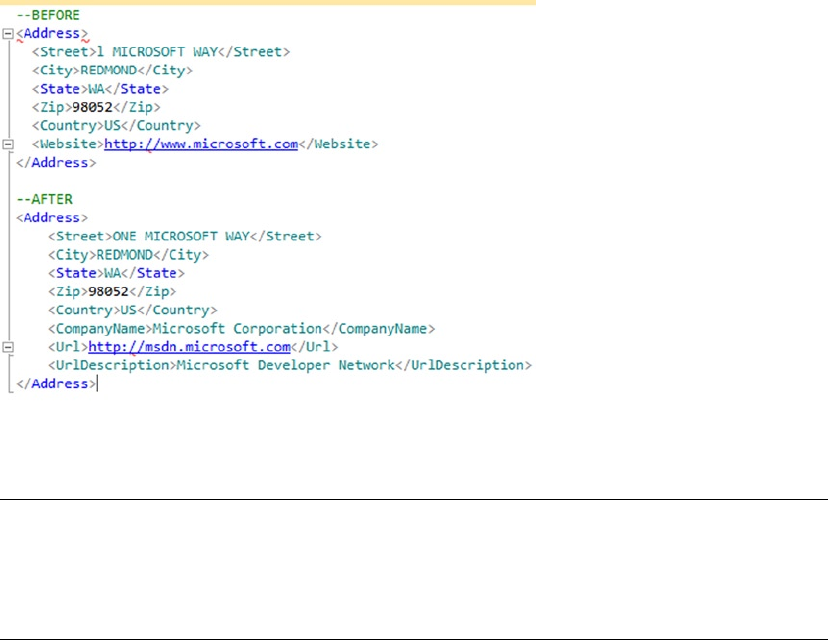

- The modify Method

- XML Indexes

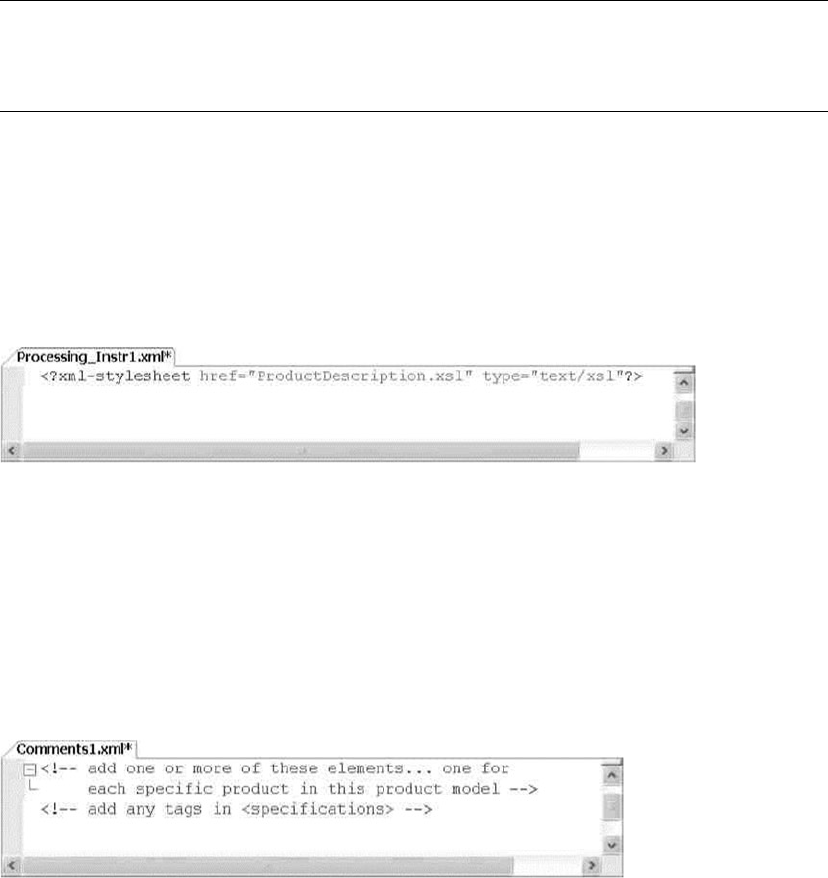

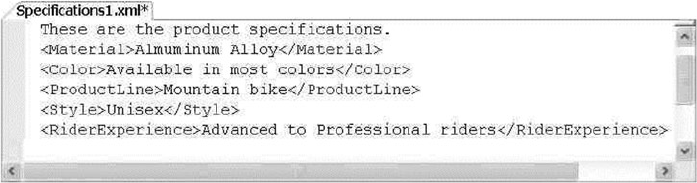

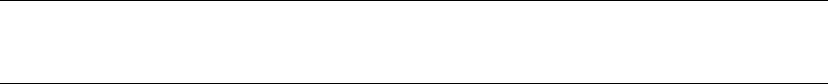

- XSL Transformations

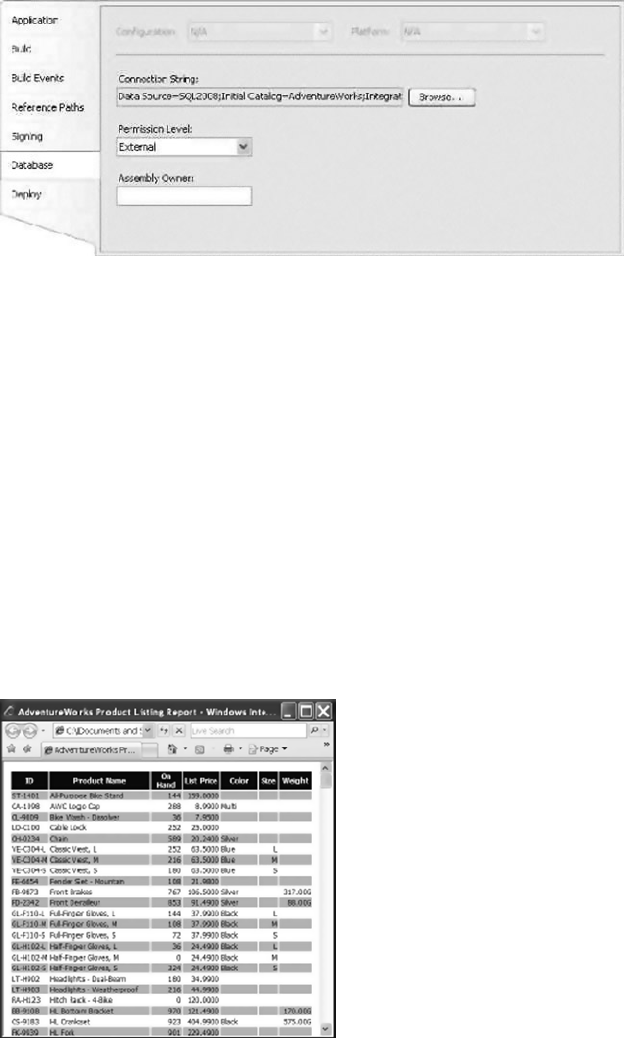

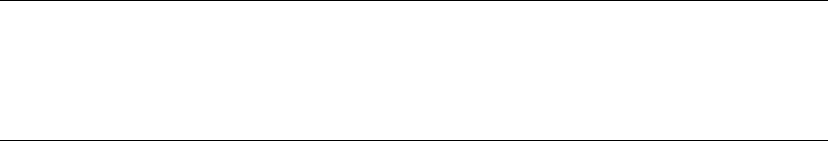

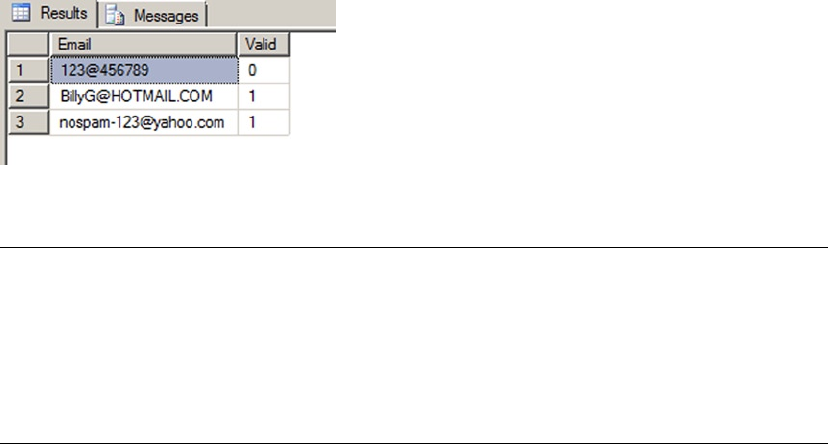

- SQL CLR Security Settings

- Summary

- Chapter 13: XQuery and XPath

- XPath and FOR XML PATH

- XPath Attributes

- Columns without Names and Wildcards

- Element Grouping

- The data Function

- Node Tests and Functions

- XPath and NULL

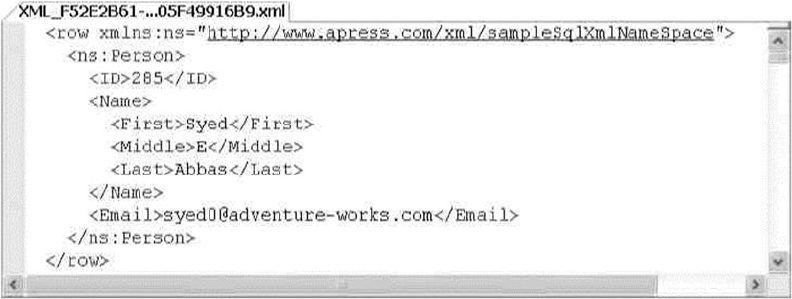

- The WITH XMLNAMESPACES Clause

- Node Tests

- XQuery and the xml Data Type

- Expressions and Sequences

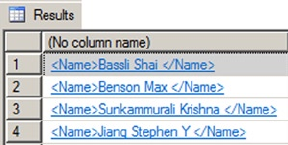

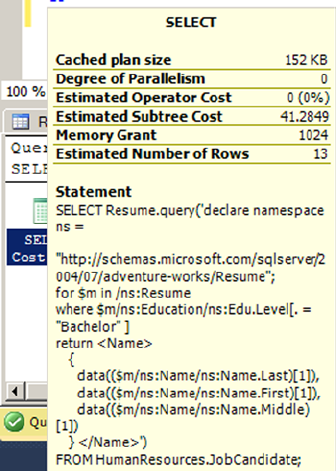

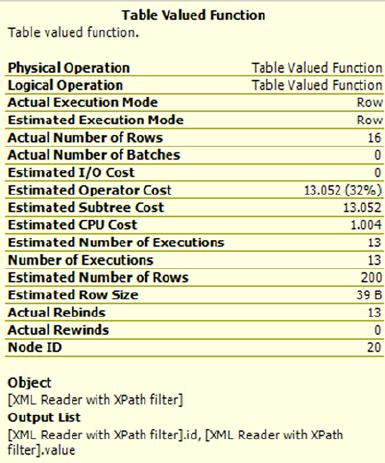

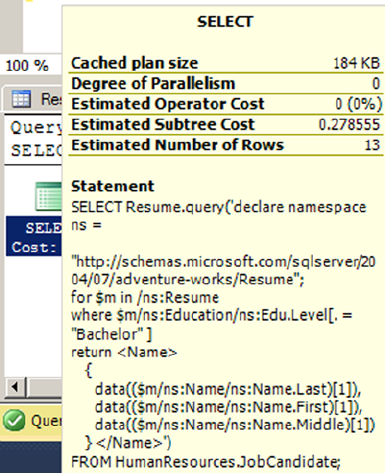

- The query Method

- Location Paths

- Node Tests

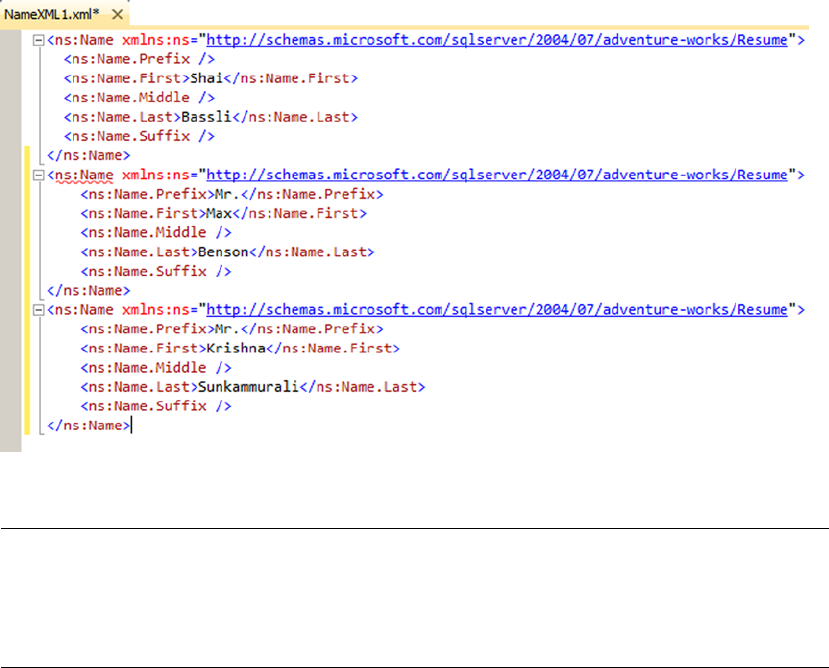

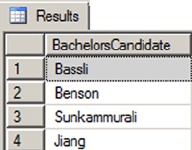

- Namespaces

- Axis Specifiers

- Dynamic XML Construction

- XQuery Comments

- Data Types

- Predicates

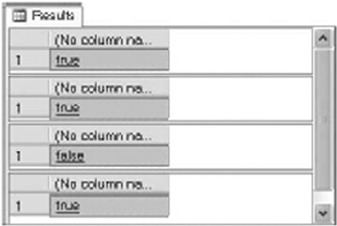

- Value Comparison Operators

- General Comparison Operators

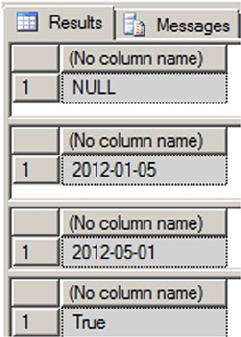

- Xquery Date Format

- Node Comparisons

- Conditional Expressions (if...then...else)

- Arithmetic Expressions

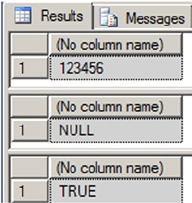

- Integer Division in XQuery

- XQuery Functions

- Constructors and Casting

- FLWOR Expressions

- The for and return Keywords

- The where Keyword

- The order by Keywords

- The let Keyword

- UTF-16 Support

- Summary

- XPath and FOR XML PATH

- Chapter 14: Catalog Views and Dynamic aent Views

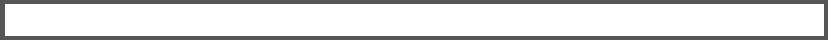

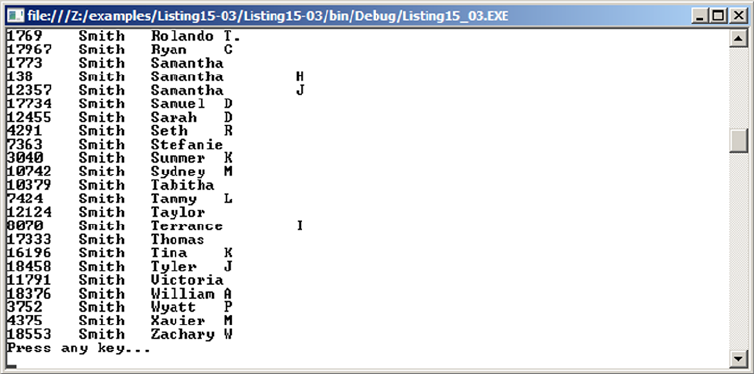

- Chapter 15: .NET Client Programming

- ADO.NET

- The .NET SQL Client

- Connected Data Access

- Disconnected Datasets

- Parameterized Queries

- Nonquery, Scalar, and XML Querying

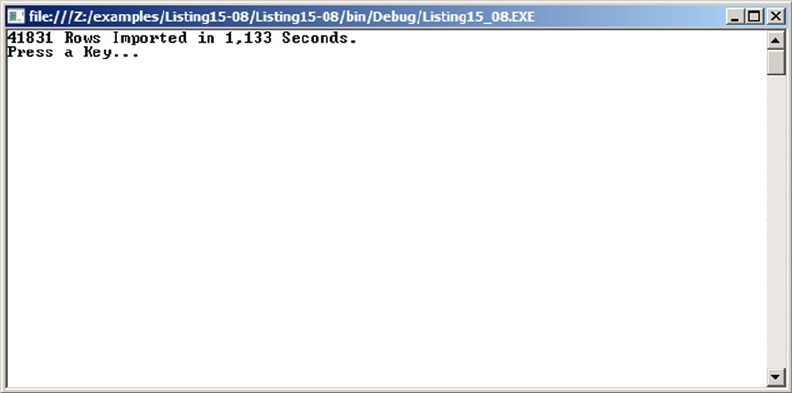

- SqIBulkCopy

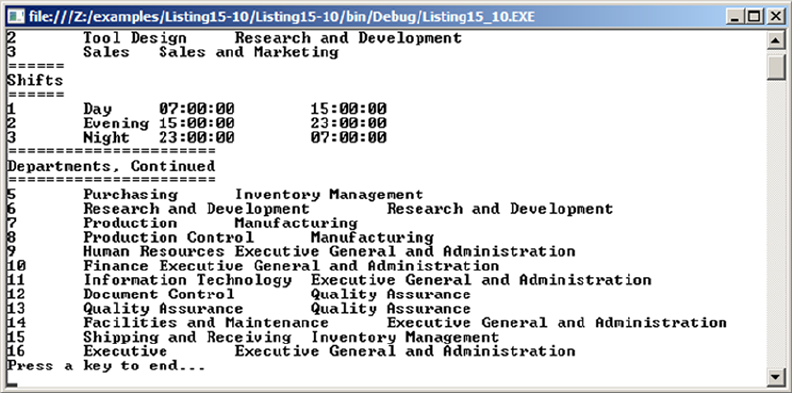

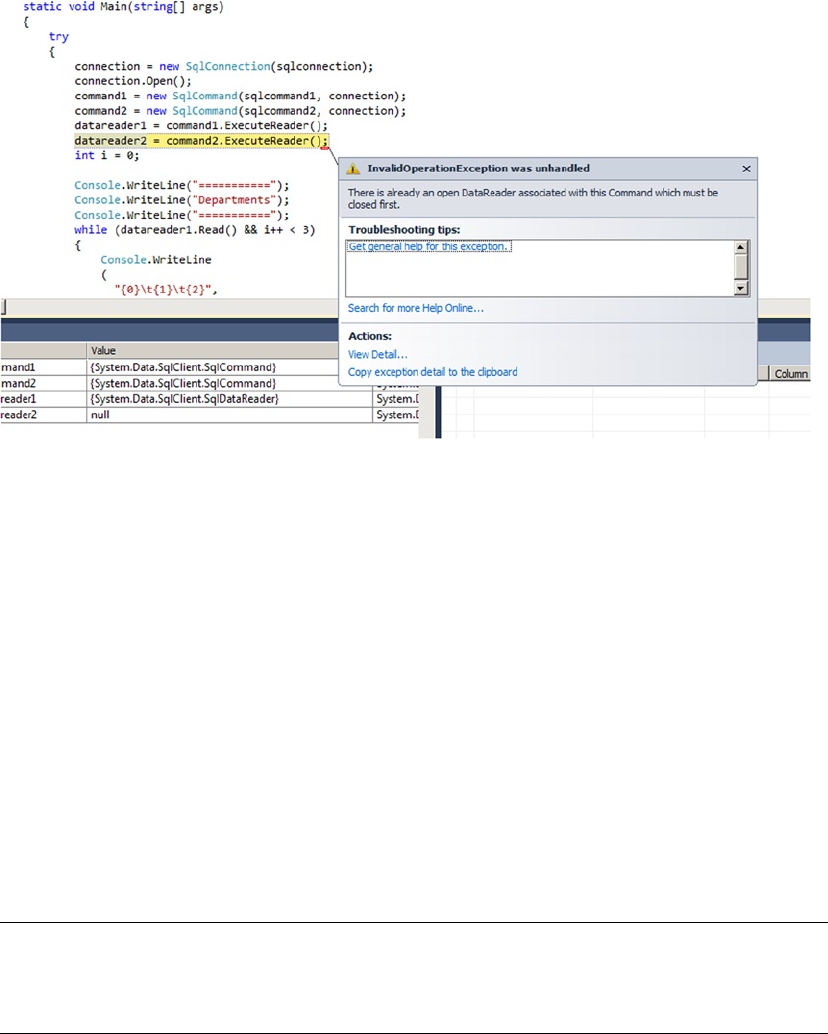

- Multiple Active Result Sets

- LINQ to SQL

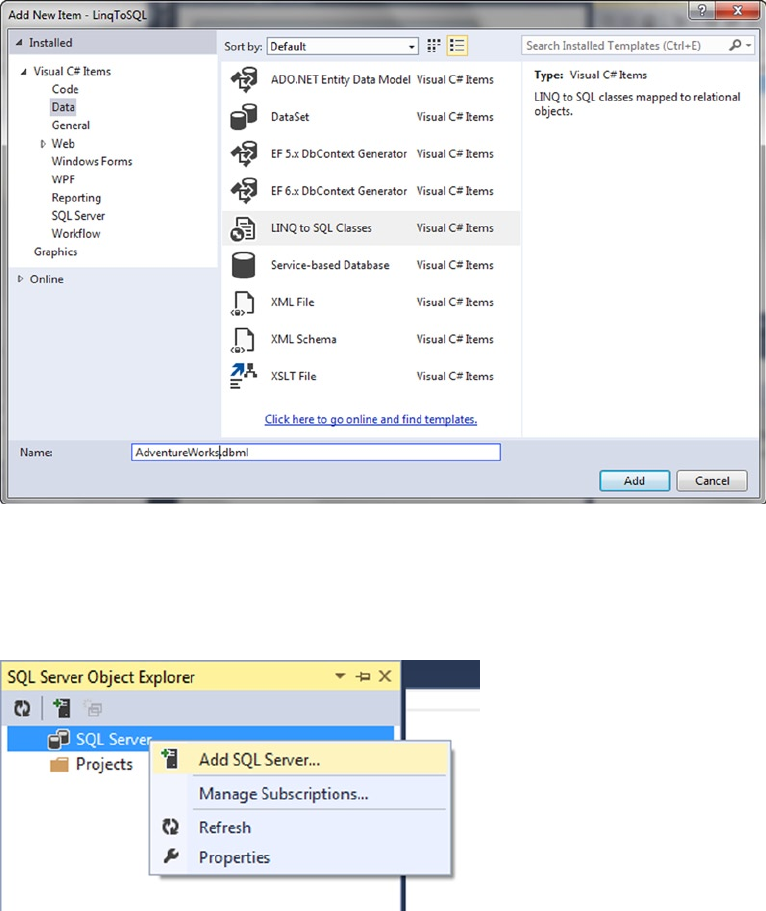

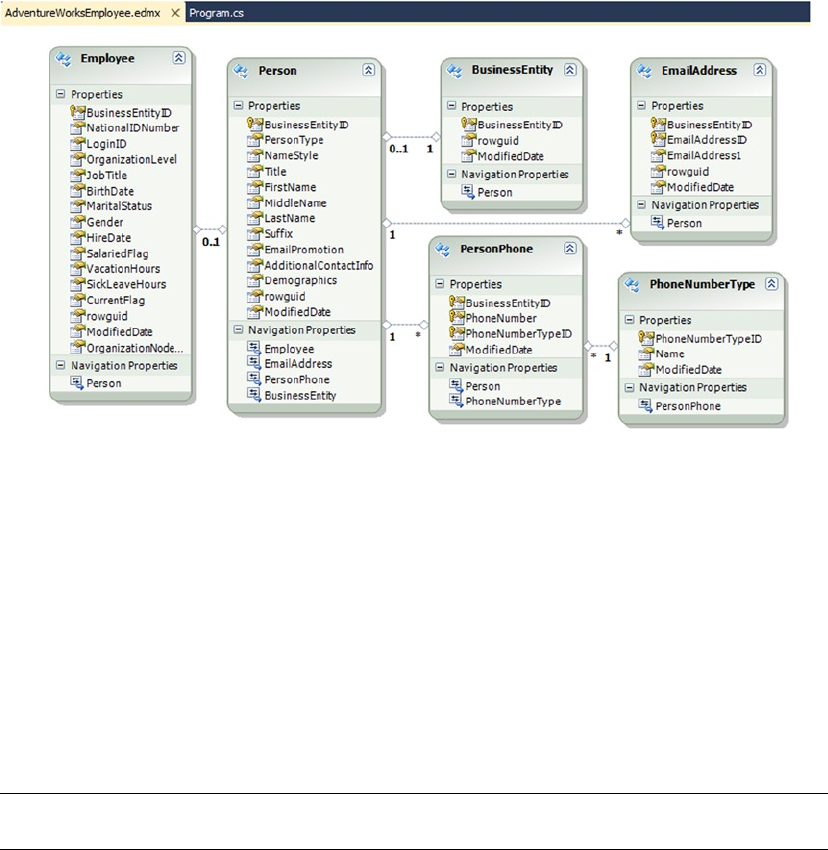

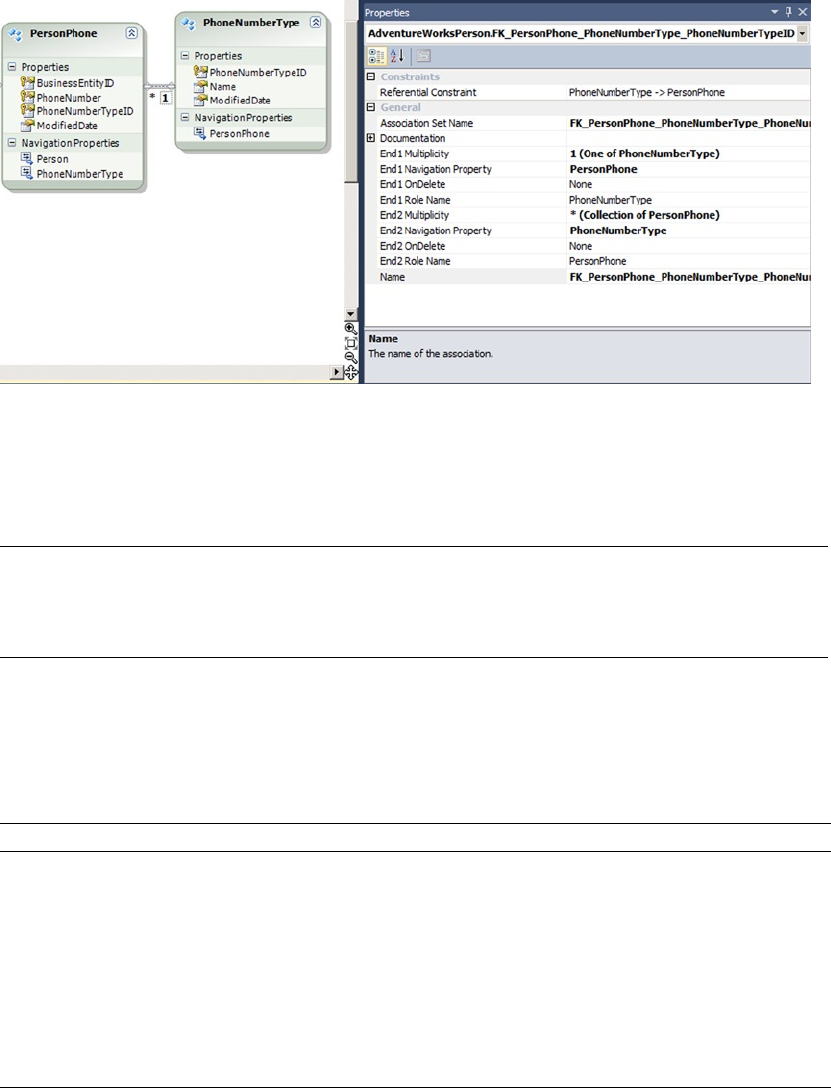

- Using the Designer

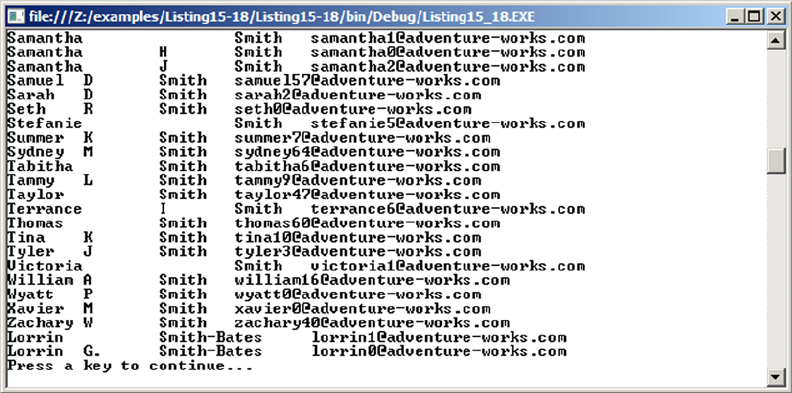

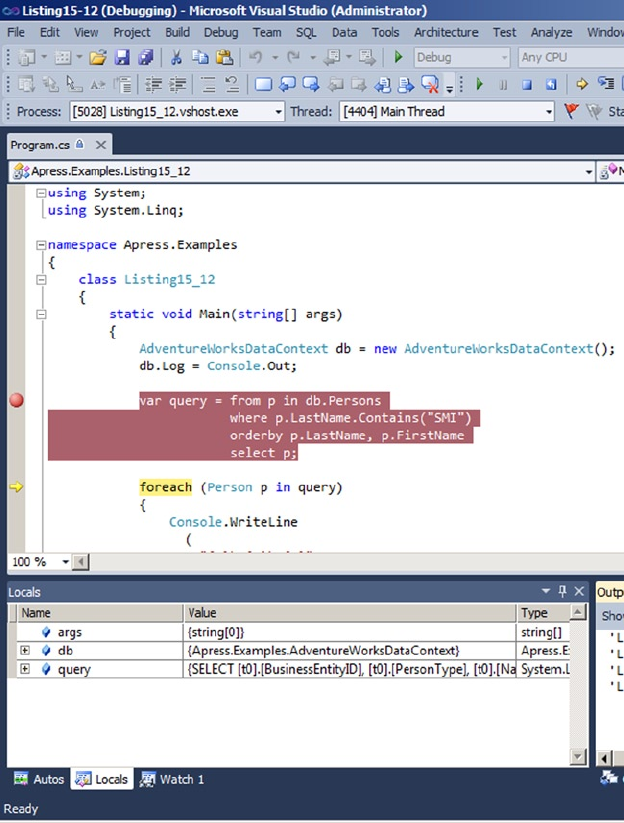

- Querying with LINQ to SQL

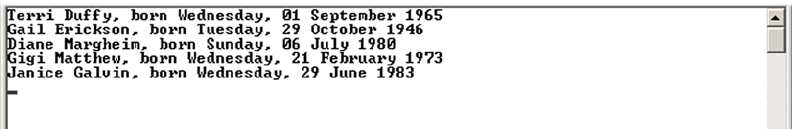

- Basic LINQ to SQL Querying

- Deferred Query Execution

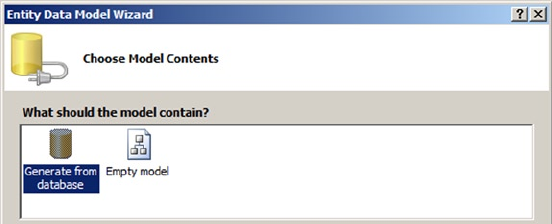

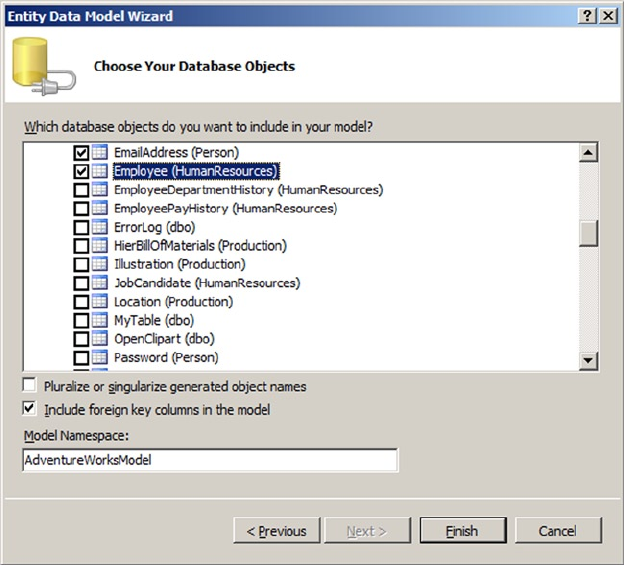

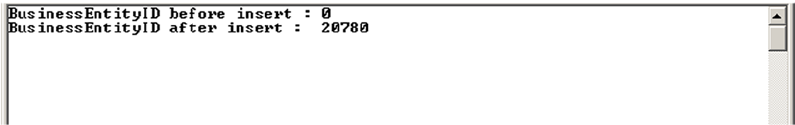

- From LINQ to Entity Framework

- Querying Entities

- Summary

- Chapter 16: CLR Integration Programming

- Chapter 17: Data Services

- Chapter 18: Error Handling and Dynamic SQL

- Chapter 19: Performance Tuning

- Appendix A: Exercise Answers

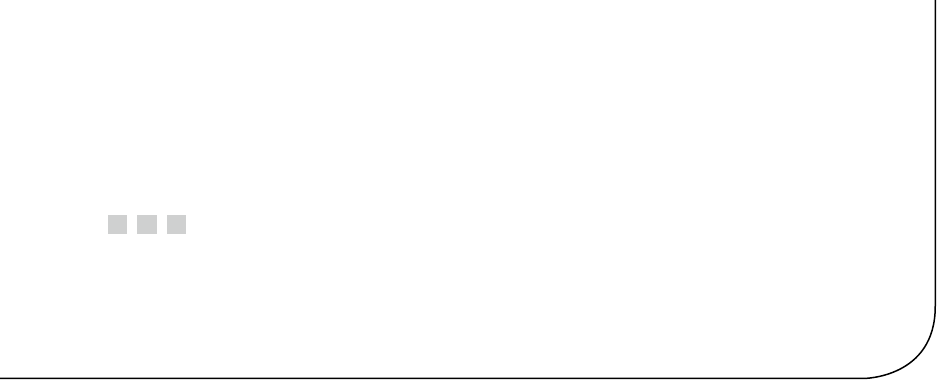

- Appendix B: XQuery Data Types

- Appendix C: Glossary

- ACID

- adjacency list model

- ADO.NET Data Services

- anchor query

- application programming interface (API)

- assembly

- asymmetric encryption

- atomic, list, and union data types

- axis

- Bulk Copy Program (BCP)

- catalog view

- certificate

- check constraint

- closed-world assumption (CWA)

- clustered index

- comment

- computed constructor

- content expression

- context item expression

- context node

- database encryption key

- database master key

- data domain

- data page

- datum

- empty sequence

- entity data model (EDM)

- Extended Events (XEvents)

- extensible key management (EKM)

- extent

- Extract, Transform, Load (ETL)

- facet

- filter expression

- FLWOR expression

- foreign key constraint

- full-text catalog

- full-text index

- full-text search (FTS)

- Functions and Operators (F&O)

- general comparison

- Geography Markup Language (GML)

- grouping set

- hash

- heap

- heterogeneous sequence

- homogenous sequence

- indirect recursion

- inflectional forms

- initialization vector (IV)

- Language Integrated Query (LINQ)

- location path

- logon trigger

- materialized path model

- Multiple Active Result Sets (MARS)

- nested sets model

- node

- node comparison

- node test

- nonclustered index

- object-relational mapping (O/RM)

- open-world assumption (OWA)

- optional occurrence indicator

- parameterization

- path expression

- predicate

- predicate truth value

- primary expression

- query plan

- recompilation

- recursion

- row constructor

- scalar function

- searched CASE expression

- sequence

- server certificate

- service master key (SMK)

- shredding

- simple CASE expression

- SOAP

- spatial data

- spatial index

- SQL Server Data Tools

- SQL injection

- step

- table type

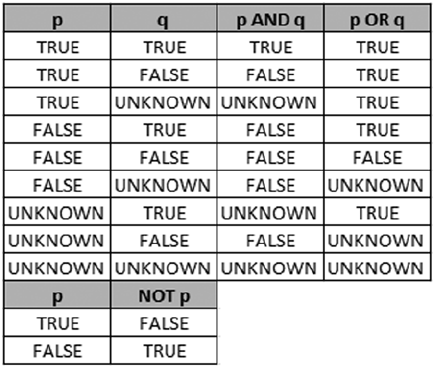

- three-valued logic (3VL)

- transparent data encryption (TDE)

- untyped XML

- user-defined aggregate (UDA)

- user-defined type (UDT)

- value comparison

- well-formed XML

- well-known text (WKT)

- windowing functions

- World Wide Web Consortium (W3C)

- XML

- XML schema

- XPath

- XQuery

- XQuery/XPath Data Model (XDM)

- XSL

- XSLT

- Appendix D: SQLCMD Quick Reference

- Index

Cebollero

Coles

Natarajan

FOURTH

EDITION

Shelve in

Databases/MS SQL Server

User level:

Intermediate–Advanced

www.apress.com

SOURCE CODE ONLINE

RELATED

BOOKS FOR PROFESSIONALS BY PROFESSIONALS®

Pro T-SQL Programmer’s Guide

Pro T–SQL Programmer’s Guide is your guide to making the best use of the

powerful, Transact-SQL programming language that is built into Microsoft SQL

Server’s database engine. This edition is updated to cover the new, in-memory

features that are part of SQL Server 2014. Discussing new and existing features,

the book takes you on an expert guided tour of Transact–SQL functionality. Fully

functioning examples and downloadable source code bring technically accurate

and engaging treatment of Transact–SQL into your own hands. Step–by–step

explanations ensure clarity, and an advocacy of best–practices will steer you down

the road to success.

Transact–SQL is the language developers and DBAs use to interact with SQL

Server. It’s used for everything from querying data, to writing stored procedures, to

managing the database. Support for in-memory stored procedures running queries

against in-memory tables is new in the language and gets coverage in this edition.

Also covered are must-know features such as window functions and data paging

that help in writing fast-performing database queries. Developers and DBAs alike

can benefit from the expressive power of T-SQL, and Pro T-SQL Programmer’s

Guide is your roadmap to success in applying this increasingly important database

language to everyday business and technical tasks.

9781484 201466

55999

ISBN 978-1-4842-0146-6

For your convenience Apress has placed some of the front

matter material after the index. Please use the Bookmarks

and Contents at a Glance links to access them.

iii

Contents at a Glance

About the Authors. ................................................................................................xxiii

About the Technical Reviewer. ...............................................................................xxv

Acknowledgments ...............................................................................................xxvii

Introduction ..........................................................................................................xxix

Chapter 1: Foundations of T-SQL ■ ........................................................................... 1

Chapter 2: Tools of the Trade ■ ............................................................................... 19

Chapter 3: Procedural Code ■ ................................................................................. 47

Chapter 4: User-Defined Functions ■ ...................................................................... 79

Chapter 5: Stored Procedures ■ ........................................................................... 111

Chapter 6: In-Memory Programming ■ ................................................................. 153

Chapter 7: Triggers ■ ............................................................................................ 177

Chapter 8: Encryption ■ ........................................................................................ 207

Chapter 9: Common Table Expressions and Windowing Functions ■ ................... 233

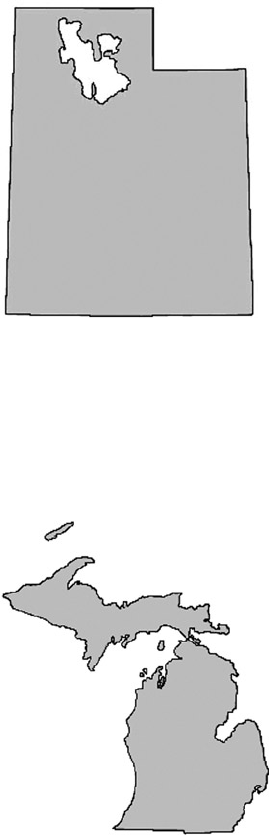

Chapter 10: Data Types and Advanced Data Types ■ ............................................ 269

Chapter 11: Full-Text Search ■ ............................................................................. 317

Chapter 12: XML ■ ................................................................................................ 347

Chapter 13: XQuery and XPath ■ .......................................................................... 387

Chapter 14: Catalog Views and Dynamic aent Views ■ ........................................ 433

Chapter 15: .NET Client Programming ■ ............................................................... 461

Chapter 16: CLR Integration Programming ■........................................................ 511

■ CONTENTS AT A GLANCE

iv

Chapter 17: Data Services ■ ................................................................................. 559

Chapter 18: Error Handling and Dynamic SQL ■ ................................................... 589

Chapter 19: Performance Tuning ■ ....................................................................... 613

Appendix A: Exercise Answers ■ .......................................................................... 653

Appendix B: XQuery Data Types ■ ......................................................................... 663

Appendix C: Glossary ■ ......................................................................................... 669

Appendix D: SQLCMD Quick Reference ■ .............................................................. 683

Index ..................................................................................................................... 693

xxix

Introduction

In the mid-1990s, when Microsoft parted ways with Sybase in their conjoint development of SQL Server, it

was an entirely dierent product. When SQL Server 6.5 was released in 1996, it was starting to gain credibility

as an enterprise-class database server. It still had rough management tools, only core functionalities, and

some limitations that are forgotten today, like xed-size devices and the inability to drop table columns. It

functioned as a rudimentary database server: storing and retrieving data for client applications. ere was

already plenty for anyone new to the relational database world to learn. Newcomers had to understand

many concepts, such as foreign keys, stored procedures, triggers, and the dedicated language, T-SQL

(which could be a baing experience—writing SELECT queries sometimes involves a lot of head-scratching).

Even when developers mastered all that, they still had to keep up with the additions Microsoft made to

the database engine with each new version. Some of the changes were not for the faint of heart, like .NET

database modules, support for XML and the XQuery language, and a full implementation of symmetric and

asymmetric encryption. ese additions are today core components of SQL Server.

Because a relational database management server (RDBMS) like SQL Server is one of the most

important elements of the IT environment, you need to make the best of it, which implies a good

understanding of its more advanced features. We have designed this book with the goal of helping T-SQL

developers get the absolute most out of the development features and functionality in SQL Server 2014.

We cover all of what’s needed to master T-SQL development, from using management and development

tools to performance tuning. We hope you enjoy the book and that it helps you to become

a pro SQL Server 2014 developer.

Whom This Book Is For

is book is intended for SQL Server developers who need to port code from prior versions of SQL Server,

and those who want to get the most out of database development on the 2014 release. You should have a

working knowledge of SQL, preferably T-SQL on SQL Server 2005 or later, because most of the examples

in this book are written in T-SQL. e book covers some of the basics of T-SQL, including introductory

concepts like data domain and three-valued logic, but this isn’t a beginner’s book. We don’t discuss database

design, database architecture, normalization, and the most basic SQL constructs in any detail. Apress oers

a beginner’s guide to T-SQL 2012 that covers more basic SQL constructs.

We focus here on advanced SQL Server 2014 functionalities, and so we assume you have a basic

understanding of SQL statements like INSERT and SELECT. A working knowledge of C# and the .NET

Framework is also useful (but not required), because two chapters are dedicated to .NET client

programming and .NET database integration.

Some examples in the book are written in C#. When C# sample code is provided, it’s explained in detail,

so an in-depth knowledge of the .NET Framework class library isn’t required.

■ INTRODUCTION

xxx

How This Book Is Structured

is book was written to address the needs of four types of readers:

SQL developers who are coming from other platforms to SQL Server 2014•

SQL developers who are moving from prior versions of SQL Server to •

SQL Server 2014

SQL developers who have a working knowledge of basic T-SQL programming and •

want to learn about advanced features

Database administrators and non-developers who need a working knowledge of •

T-SQL functionality to eectively support SQL Server 2014 instances

For all types of readers, this book is designed to act as a tutorial that describes and demonstrates T-SQL

features with working examples, and as a reference for quickly locating details about specic features. e

following sections provide a chapter-by-chapter overview.

Chapter 1

Chapter 1 starts this book by putting SQL Server 2014’s implementation of T-SQL in context, including a

short history, a discussion of the basics, and an overview of T-SQL coding best practices.

Chapter 2

Chapter 2 gives an overview of the tools that are packaged with SQL Server and available to SQL Server

developers. Tools discussed include SQL Server Management Studio (SSMS), SQLCMD, SQL Server Data

Tools (SSDT), and SQL Proler, among others.

Chapter 3

Chapter 3 introduces T-SQL procedural code, including control-of-ow statements like IF...THEN and

WHILE. is chapter also discusses CASE expressions and CASE-derived functions, and provides an

in-depth discussion of SQL three-valued logic.

Chapter 4

Chapter 4 discusses the various types of T-SQL user-dened functions available to encapsulate T-SQL logic

on the server. We talk about all forms of T-SQL–based user-dened functions, including scalar user-dened

functions, inline table-valued functions, and multistatement table-valued functions.

Chapter 5

Chapter 5 covers stored procedures, which allow you to create server-side T-SQL subroutines.

In addition to describing how to create and execute stored procedures on SQL Server, we also address an

issue that is thorny for some: why you might want to use stored procedures.

■ INTRODUCTION

xxxi

Chapter 6

Chapter 6 covers the latest features available in SQL Server 2014: In-Memory OLTP tables.

e In-Memory features provide the capability to dramatically increase the database performance of an

OLTP or data-warehouse instance. With the new features also come some limitations.

Chapter 7

Chapter 7 introduces all three types of SQL Server triggers: classic DML triggers, which re in response

to DML statements; DDL triggers, which re in response to server and database DDL events; and logon

triggers, which re in response to server LOGON events.

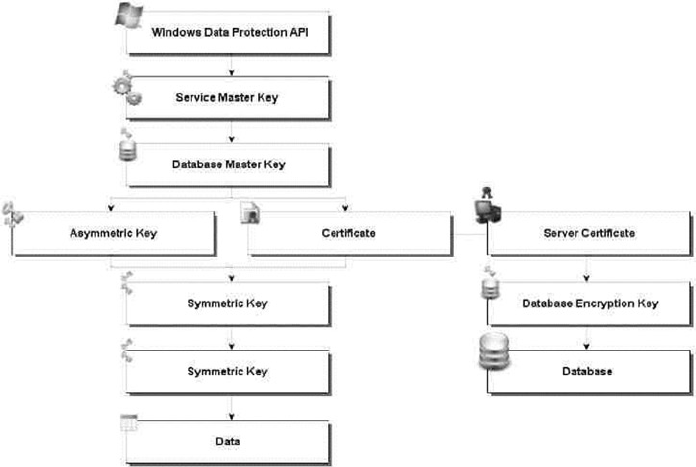

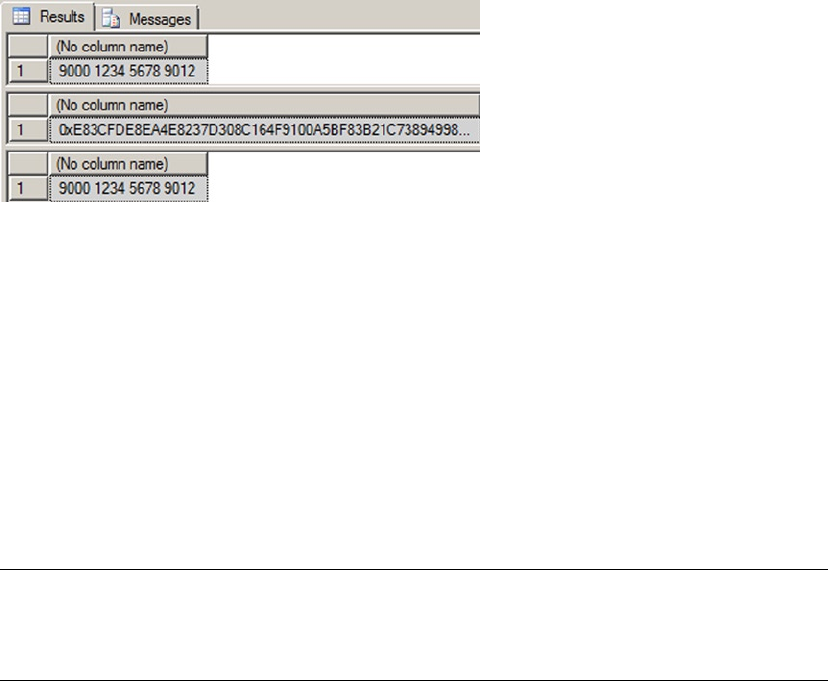

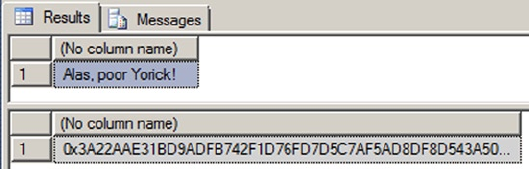

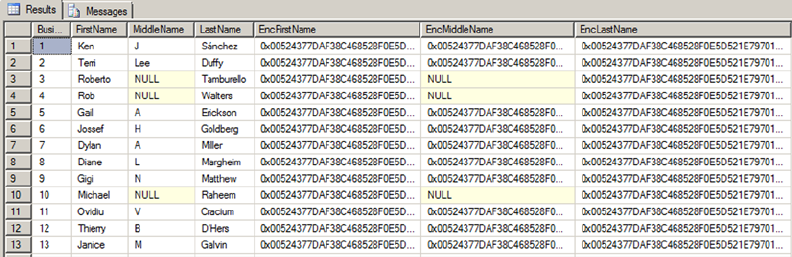

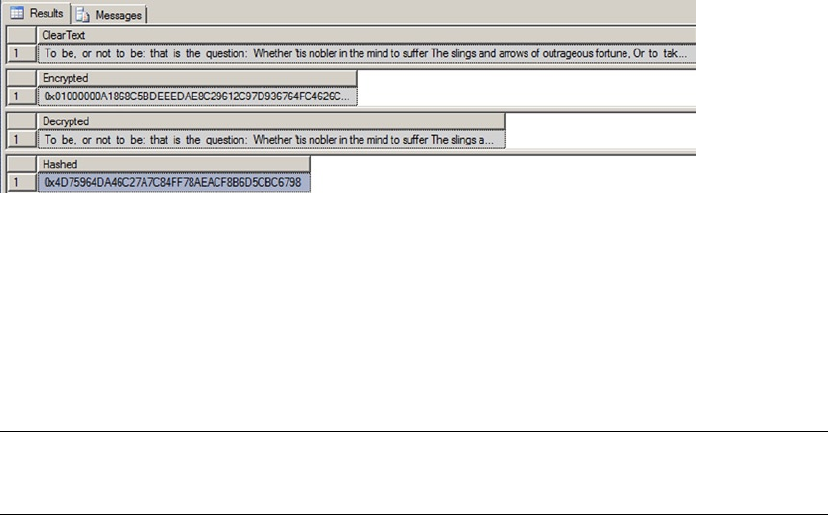

Chapter 8

Chapter 8 discusses SQL Server encryption, including the column-level encryption functionality introduced

in SQL Server 2005 and the newer transparent database encryption (TDE) and extensible key management

(EKM) functionality, both introduced in SQL Server 2008.

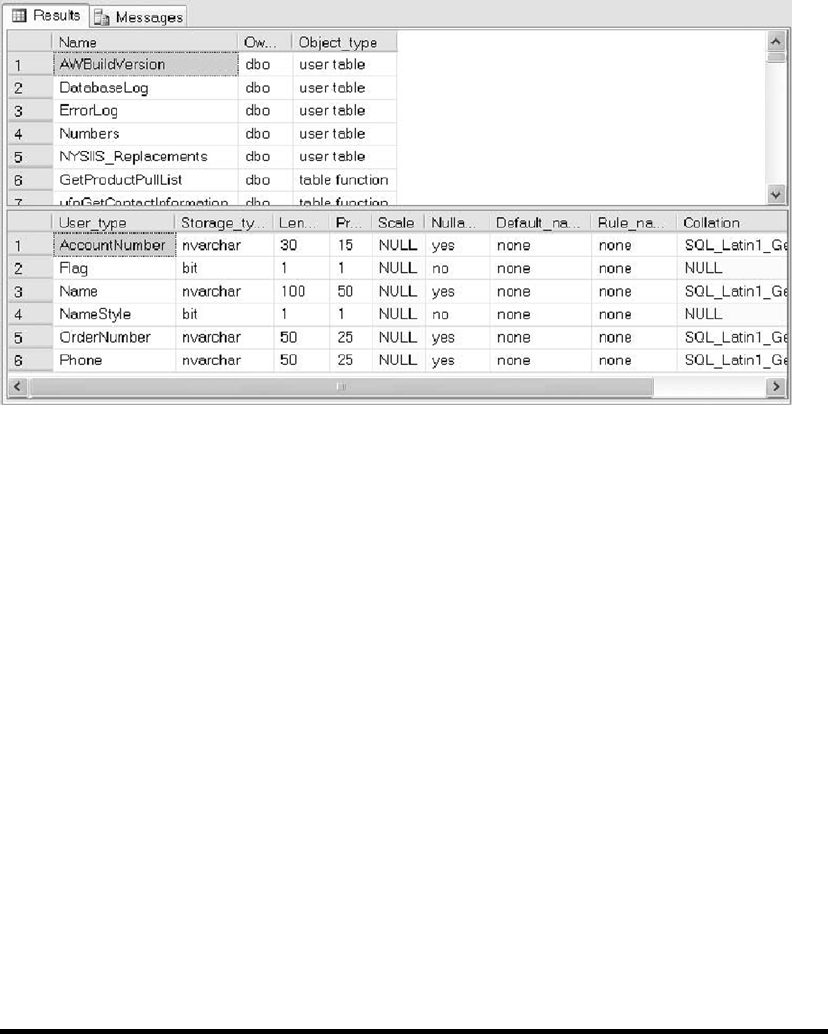

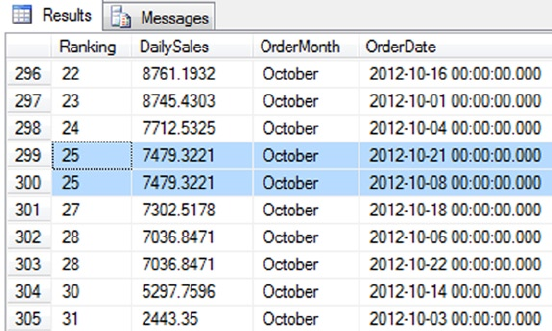

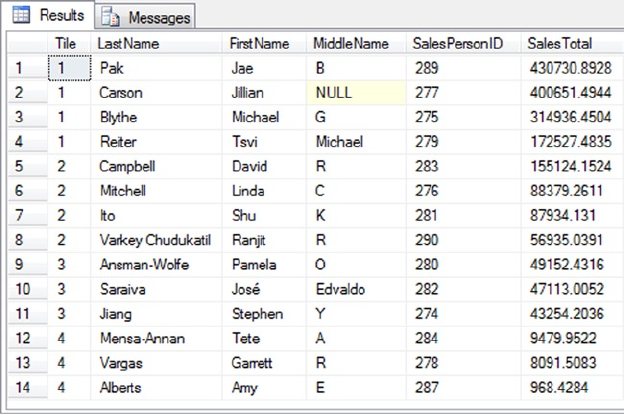

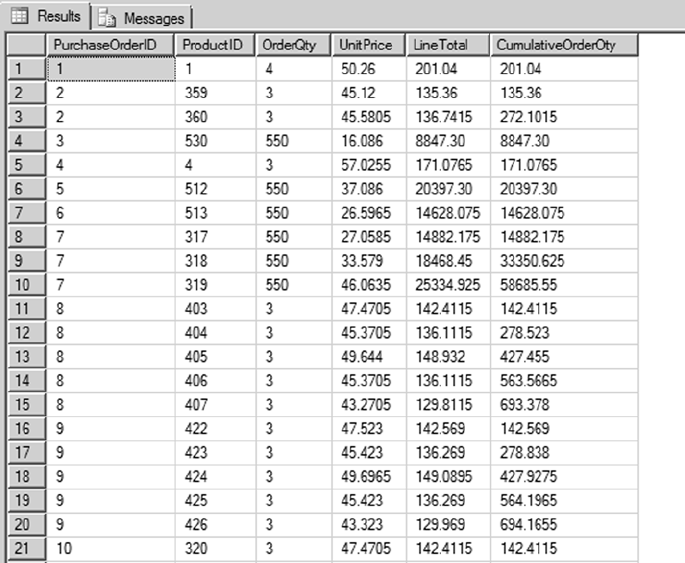

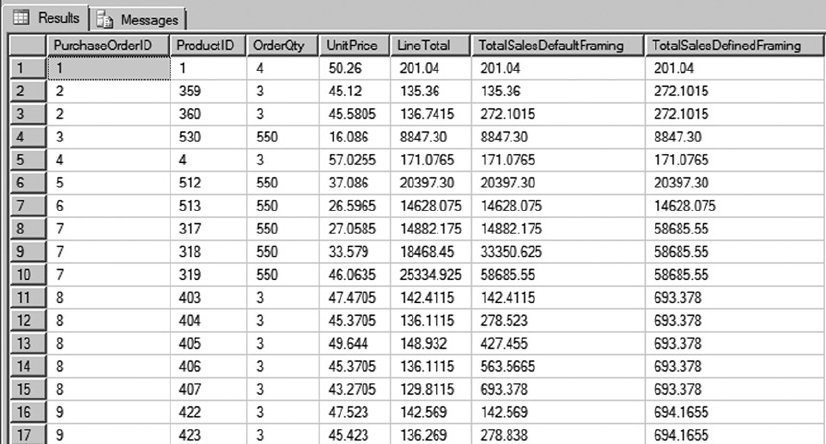

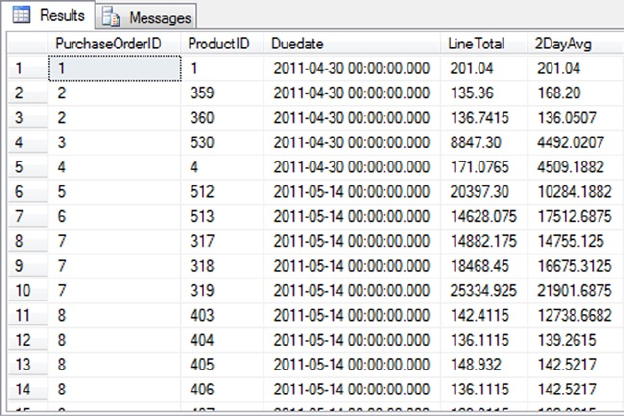

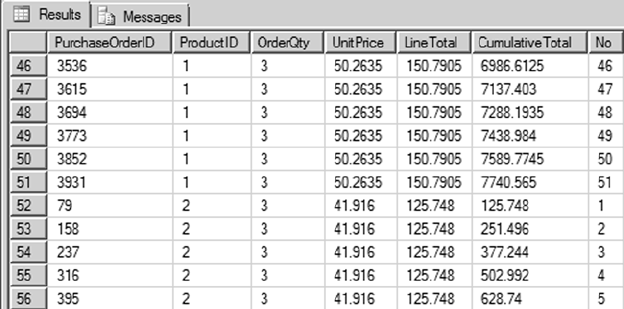

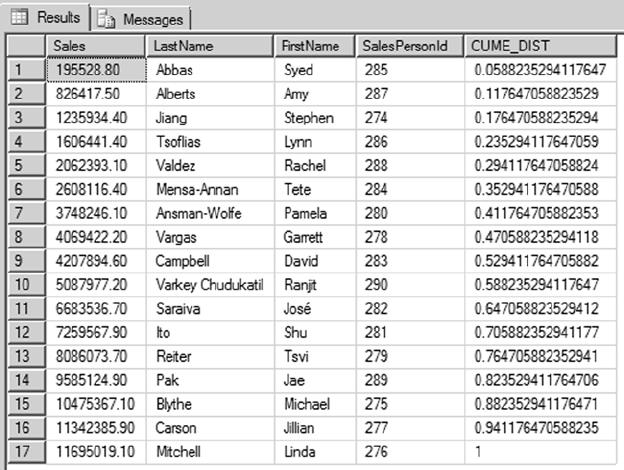

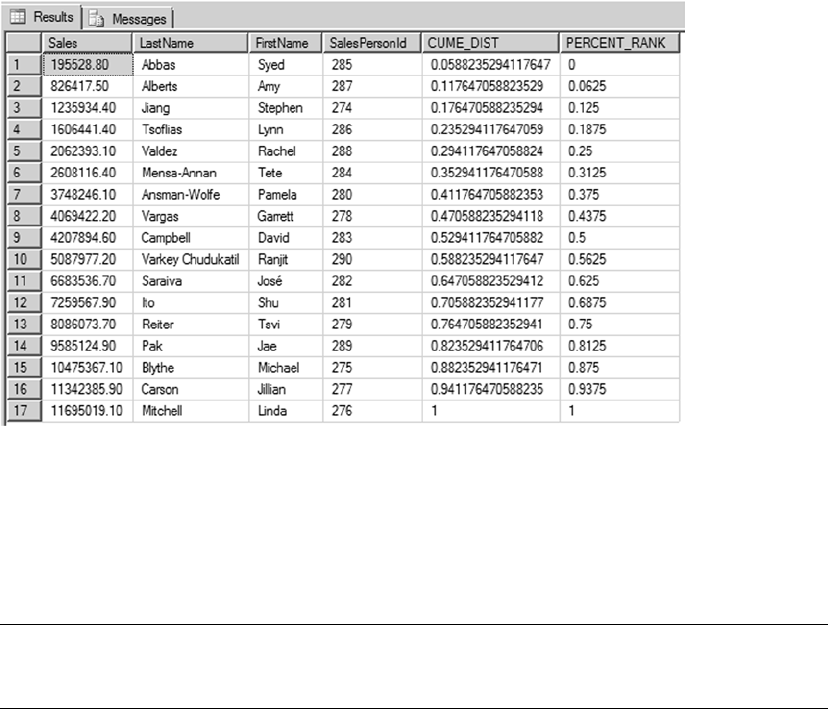

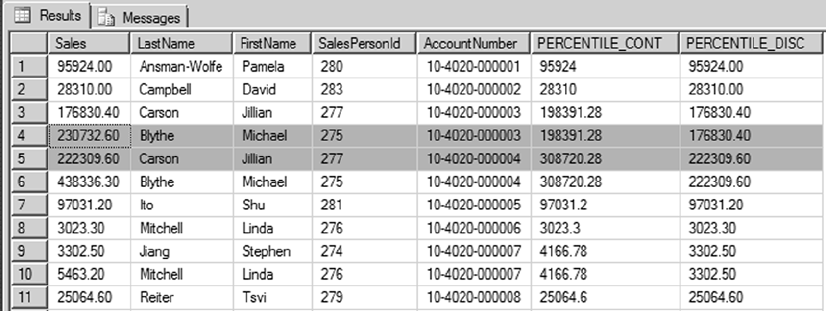

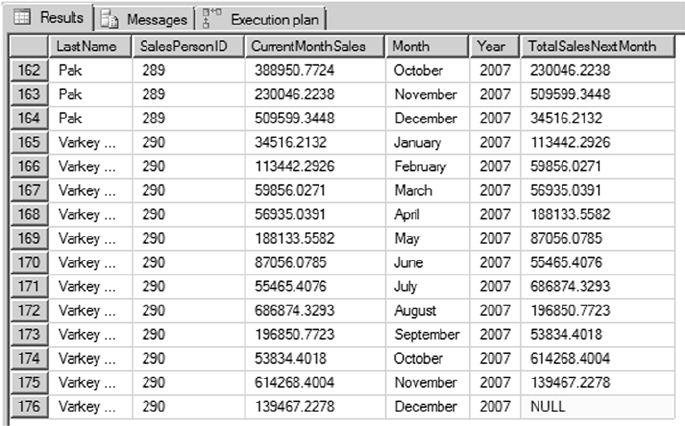

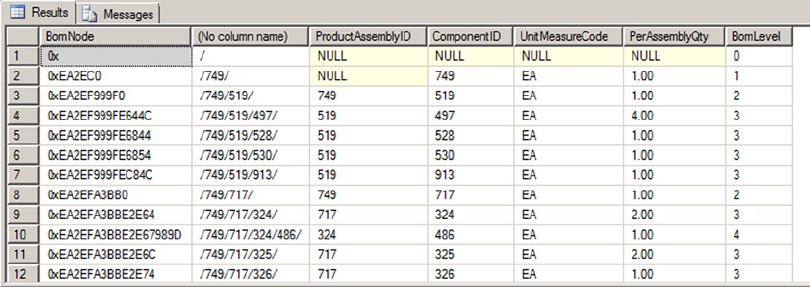

Chapter 9

Chapter 9 dives into the details of common table expressions (CTEs) and windowing functions in

SQL Server 2014, which feature some improvements to the OVER clause to achieve row-level running and

sliding aggregations.

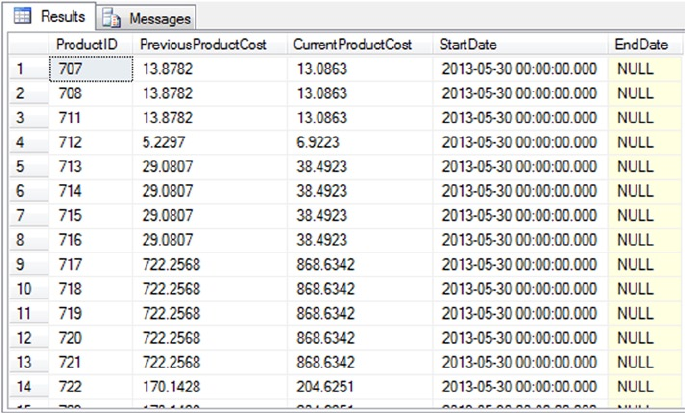

Chapter 10

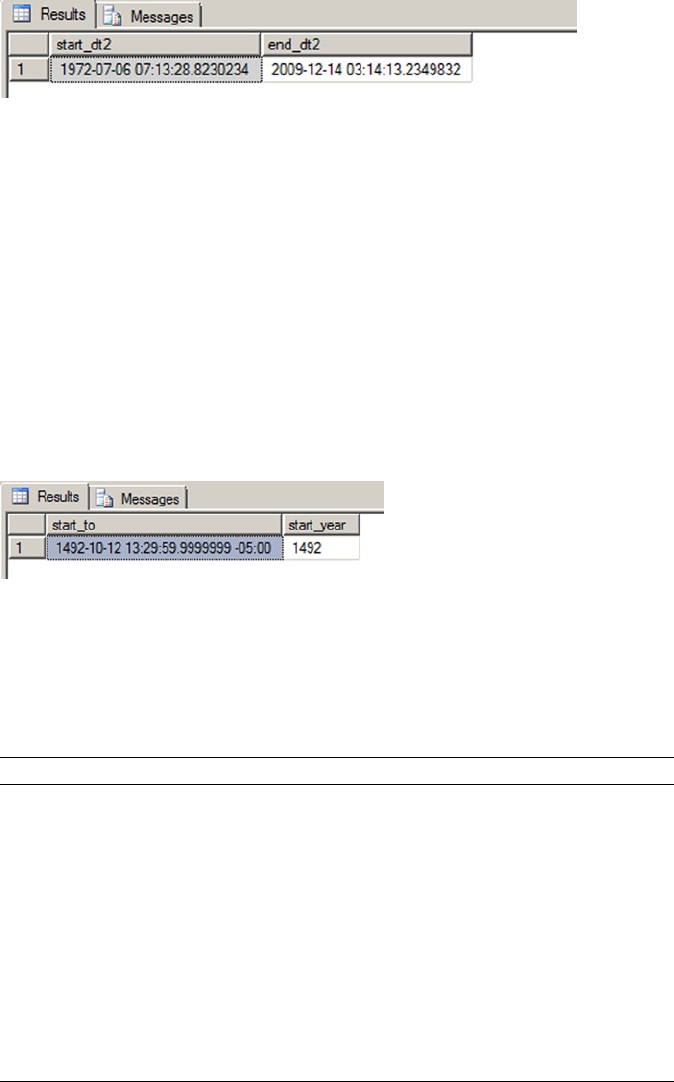

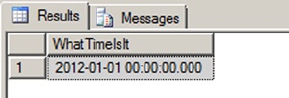

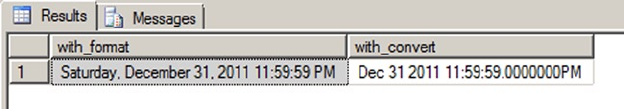

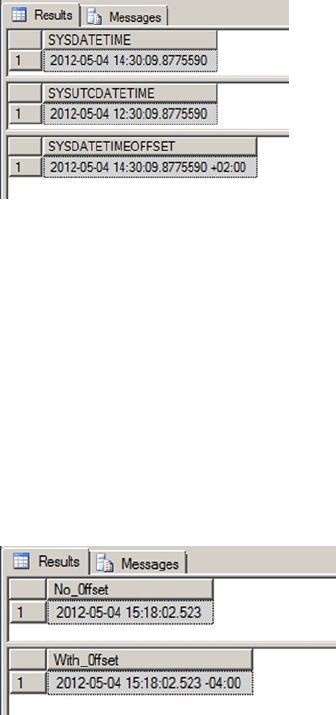

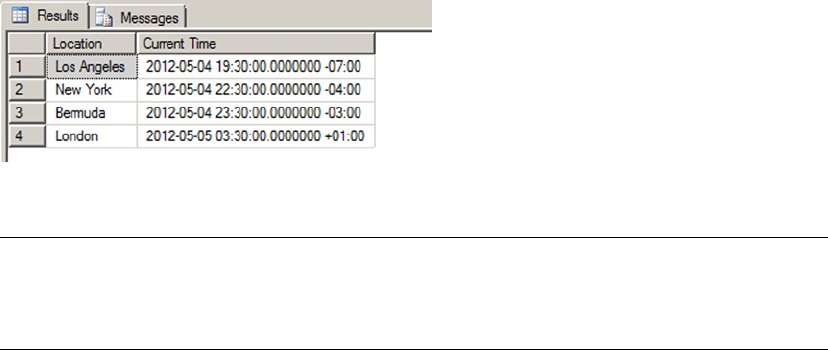

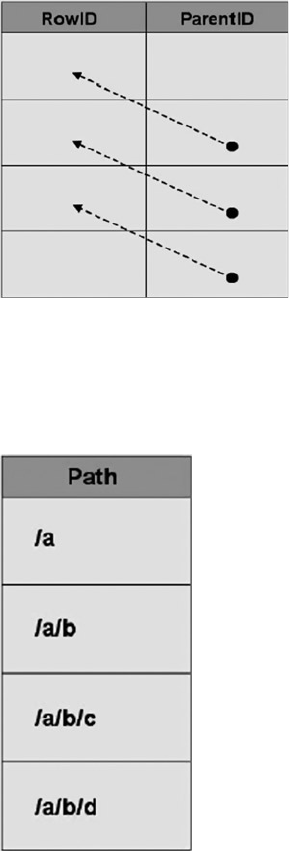

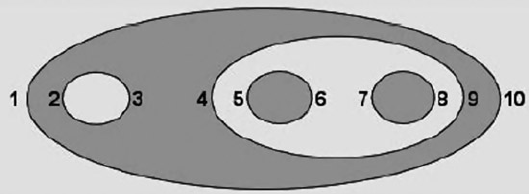

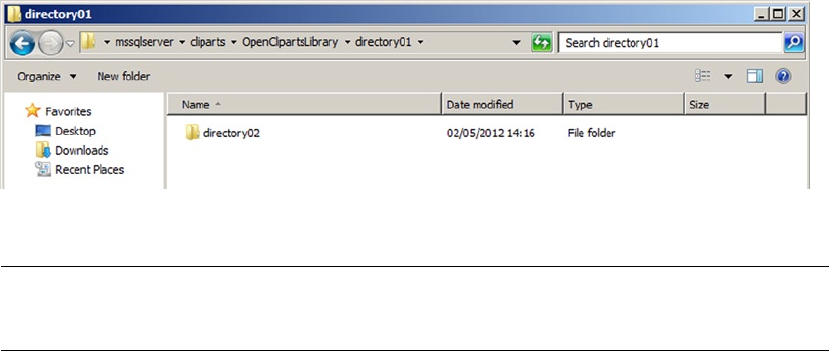

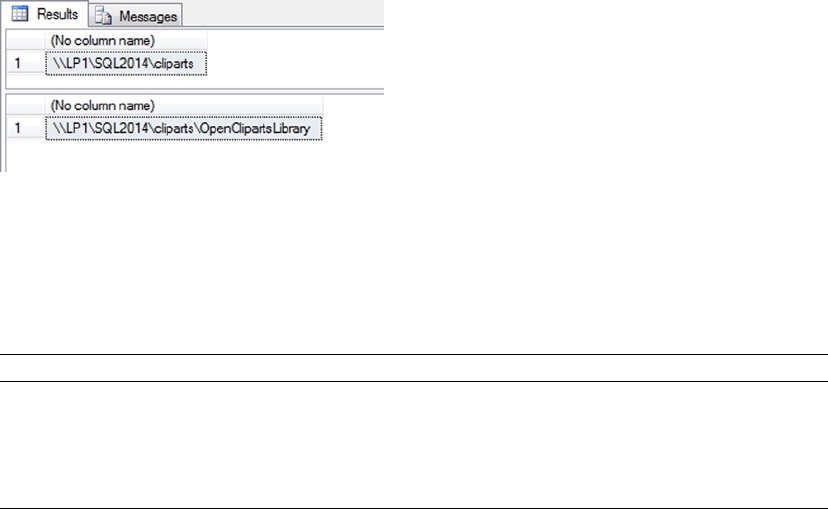

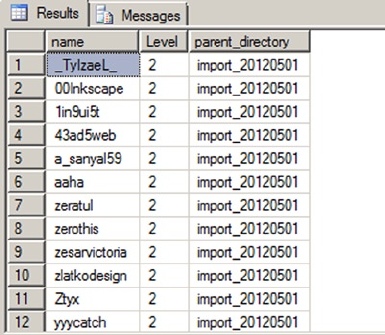

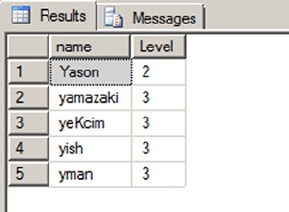

Chapter 10 discusses T-SQL data types: rst some important things to know about basic data types, such

as how to handle date and time in your code, and then advanced data types and features, such as the

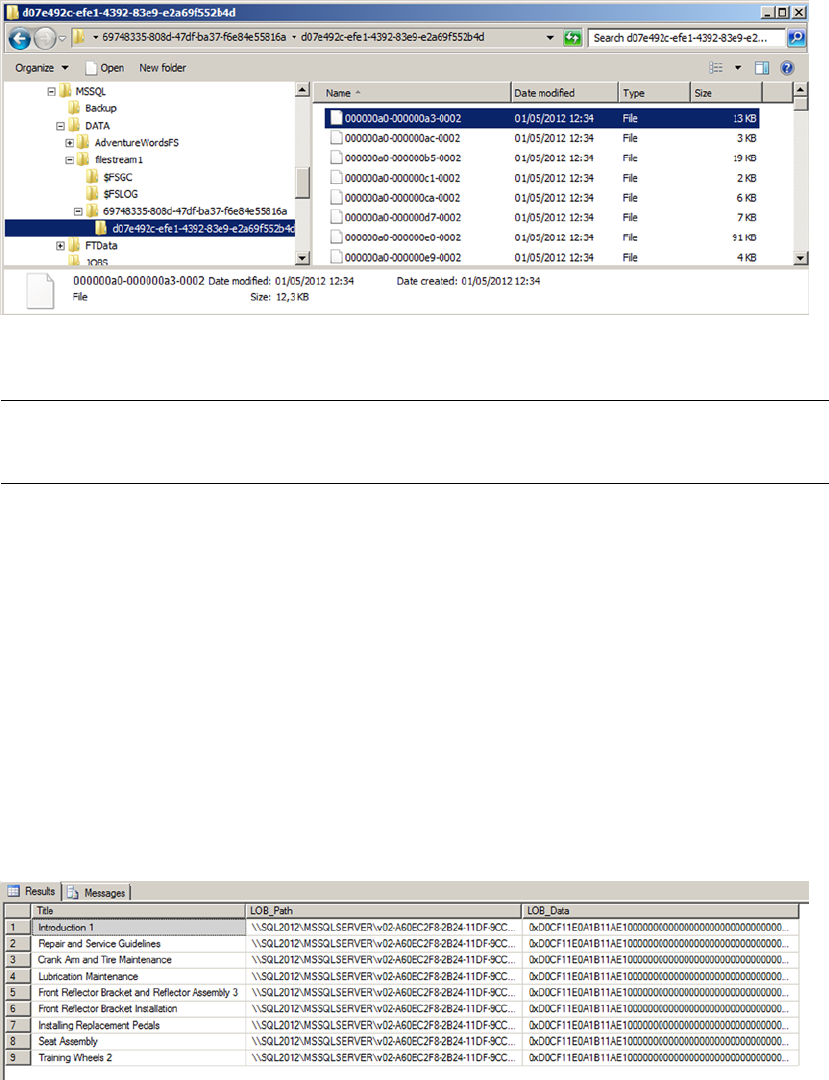

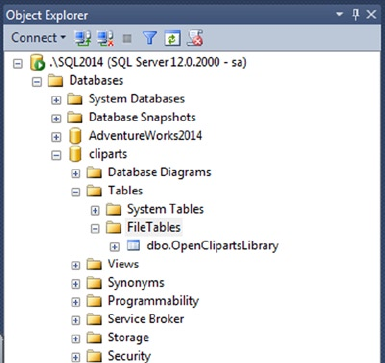

hierarchyid complex type and FILESTREAM and filetable functionality.

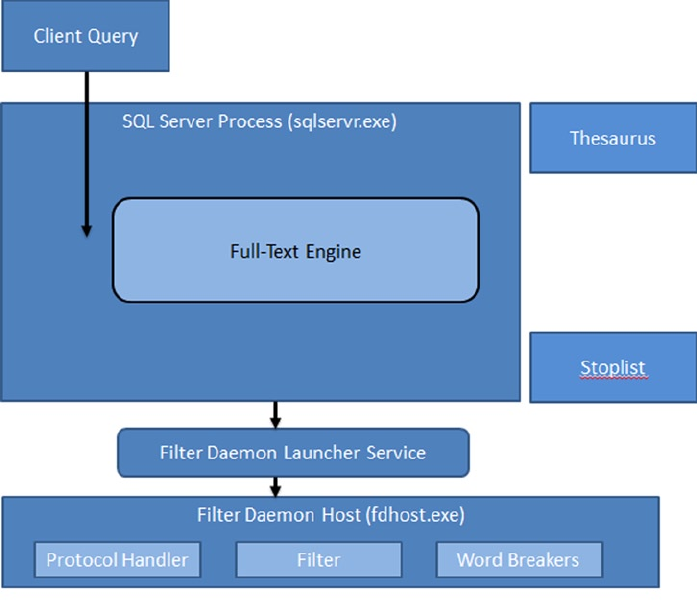

Chapter 11

Chapter 11 covers the full-text search (FTS) feature and advancements made since SQL Server 2008,

including greater integration with the SQL Server query engine and greater transparency by way of

FTS-specic data-management views and functions.

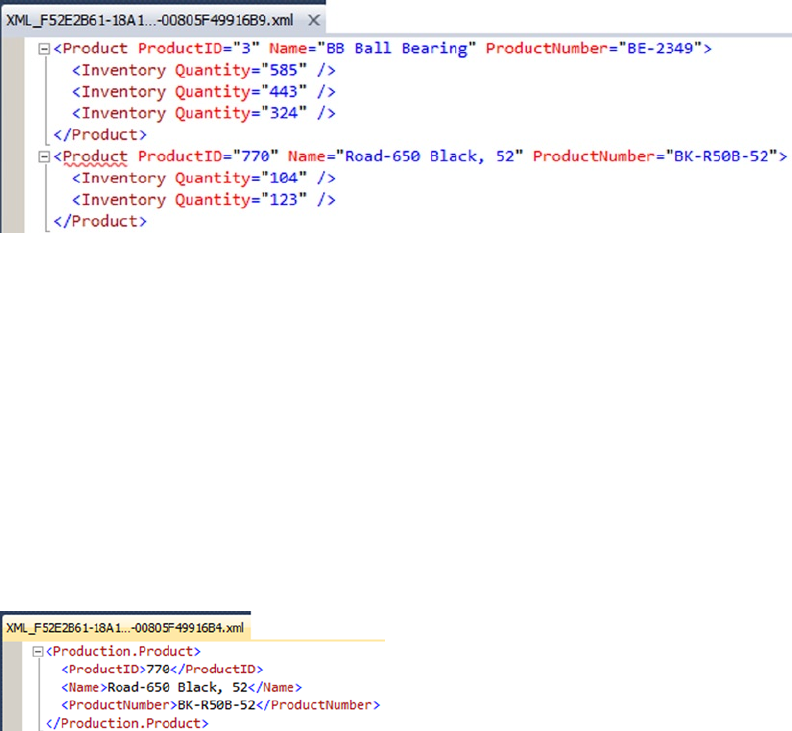

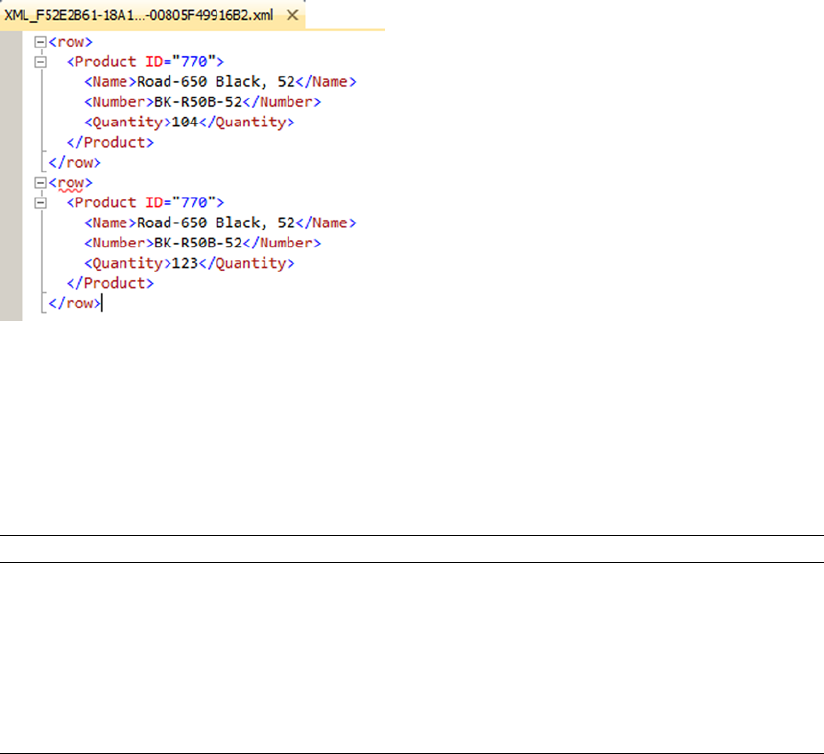

Chapter 12

Chapter 12 provides an in-depth discussion of SQL Server 2014 XML functionality, which carries forward

and improve on the new features introduced in SQL Server 2005. We cover several XML-related topics in this

chapter, including the xml data type and its built-in methods, the FOR XML clause, and XML indexes.

■ INTRODUCTION

xxxii

Chapter 13

Chapter 13 discusses XQuery and XPath support in SQL Server 2014, including improvements on the

XQuery support introduced in SQL Server 2005, such as support for the xml data type in XML DML insert

statements and the let clause in FLWOR expressions.

Chapter 14

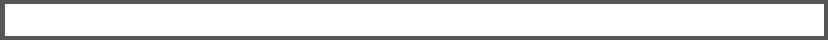

Chapter 14 introduces SQL Server catalog views, which are the preferred tools for retrieving database and

database object metadata. is chapter also discusses dynamic-management views and functions, which

provide access to server and database state information.

Chapter 15

Chapter 15 covers SQL CLR Integration functionality in SQL Server 2014. In this chapter, we discuss and

provide examples of SQL CLR stored procedures, user-dened functions, user-dened types, and

user-dened aggregates.

Chapter 16

Chapter 16 focuses on client-side support for SQL Server, including ADO.NET-based connectivity and the

newest Microsoft object-relational mapping (ORM) technology, Entity Framework 4.

Chapter 17

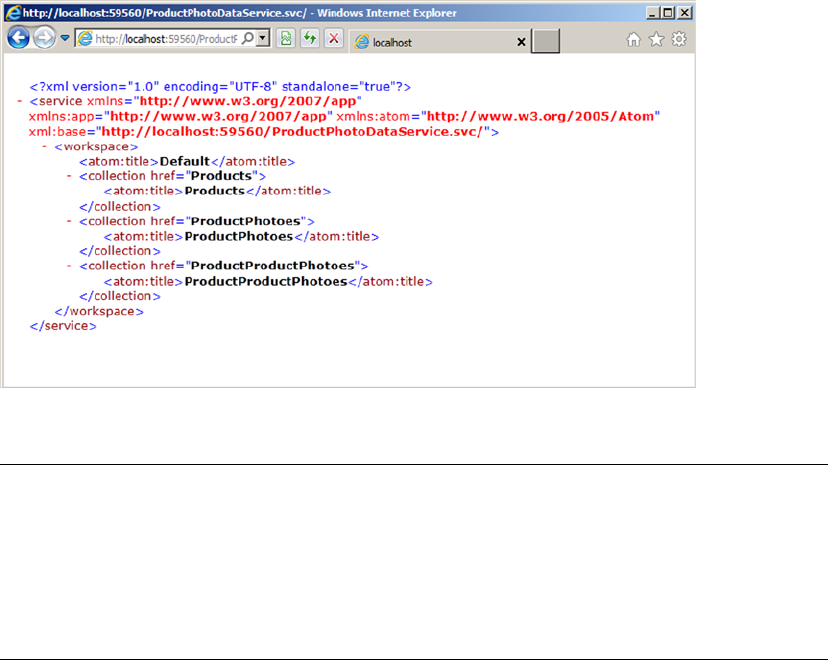

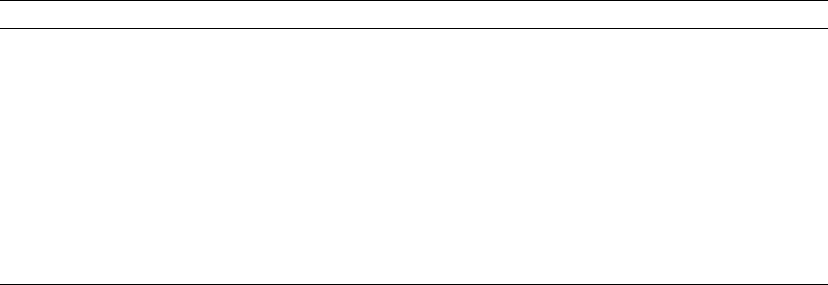

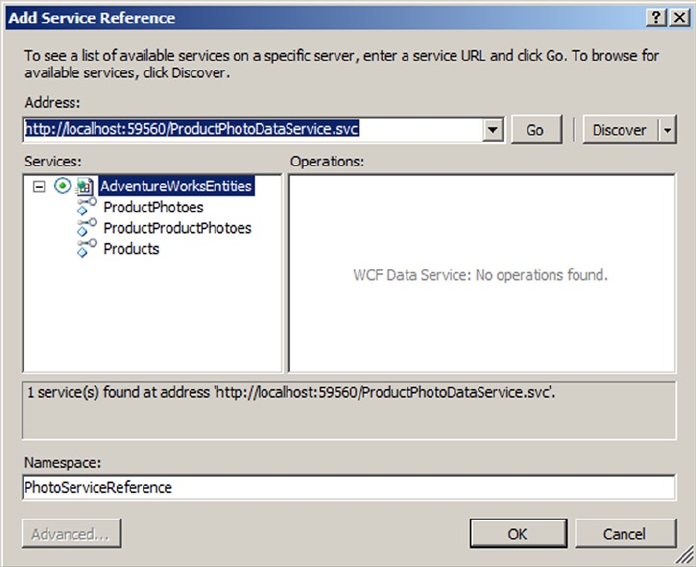

Chapter 17 discusses SQL Server connectivity using middle-tier technologies. Because native HTTP

endpoints have been deprecated since SQL Server 2008, we discuss them as items that may need to be

supported in existing databases but shouldn’t be used for new development. We focus instead on possible

replacement technologies, such as ADO.NET data services and IIS/.NET web services.

Chapter 18

Chapter 18 discusses improvements to server-side error handling made possible with the TRY...CATCH

block. We also discuss various methods for debugging code, including using the Visual Studio T-SQL

debugger. is chapter wraps up with a discussion of dynamic SQL and SQL injection, including the causes

of SQL injection and methods you can use to protect your code against this type of attack.

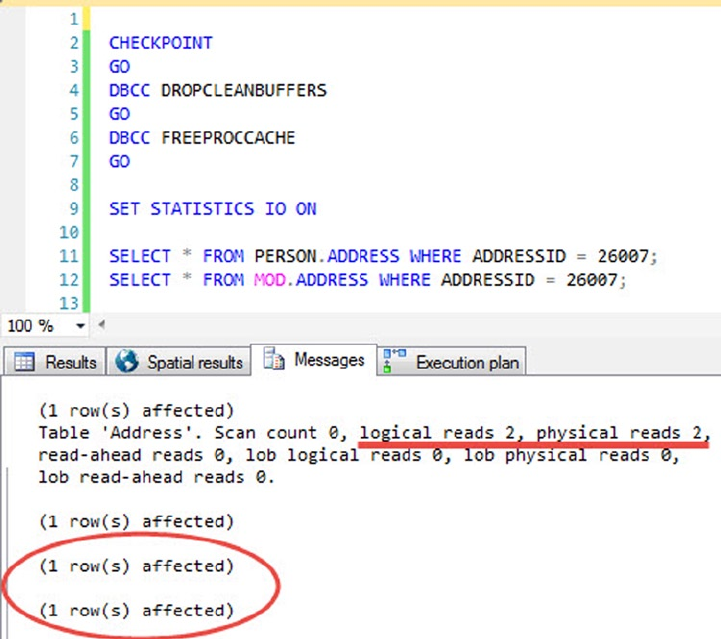

Chapter 19

Chapter 19 provides an overview of performance-tuning SQL Server code. is chapter discusses SQL

Server storage, indexing mechanisms, and query plans. We end the chapter with a discussion of a proven

methodology for troubleshooting T-SQL performance issues.

■ INTRODUCTION

xxxiii

Appendix A

Appendix A provides the answers to the exercise questions included at the end of each chapter.

Appendix B

Appendix B is designed as a quick reference to the XQuery Data Model (XDM) type system.

Appendix C

Appendix C provides a quick reference glossary to several terms, many of which may be new to those using

SQL Server for the rst time.

Appendix D

Appendix D is a quick reference to the SQLCMD command-line tool, which allows you to execute

ad hoc T-SQL statements and batches interactively, or run script les.

Conventions

To help make reading this book a more enjoyable experience, and to help you get as much out of it as

possible, we’ve used the following standardized formatting conventions throughout.

C# code is shown in code font. Note that C# code is case sensitive. Here’s an example:

while (i < 10)

T-SQL source code is also shown in code font, with keywords capitalized. Note that we’ve lowercased

the data types in the T-SQL code to help improve readability. Here’s an example:

DECLARE @x xml;

XML code is shown in code font with attribute and element content in bold for readability.

Some code samples and results have been reformatted in the book for easier reading. XML ignores

whitespace, so the signicant content of the XML has not been altered. Here’s an example:

<book publisher = "Apress">Pro SQL Server 2014 XML</book>:

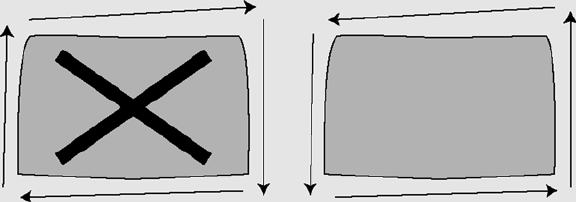

Note ■ Notes, tips, and warnings are displayed like this, in a special font with solid bars placed

over and under the content.

Sidebars include additional information relevant to the current discussion and other interesting facts.

Sidebars are shown on a gray background.

SIDEBARS

■ INTRODUCTION

xxxiv

Prerequisites

is book requires an installation of SQL Server 2014 to run the T-SQL sample code provided. Note that the

code in this book has been specically designed to take advantage of SQL Server 2014 features, and some

of the code samples won’t run on prior versions of SQL Server. e code samples presented in the book

are designed to be run against the AdventureWorks 2014 and SQL Server 2014 In-Memory OLTP sample

databases, available from the CodePlex web site at www.codeplex.com/MSFTDBProdSamples.

e database name used in the samples is not AdventureWorks2014, but AdventureWorks or 2014

In-Memory, for the sake of simplicity.

If you’re interested in compiling and deploying the .NET code samples (the client code and SQL

CLR examples) presented in the book, we highly recommend an installation of Visual Studio 2010 or a

later version. Although you can compile and deploy .NET code from the command line, we’ve provided

instructions for doing so through the Visual Studio Integrated Development Environment (IDE).

We nd that the IDE provides a much more enjoyable experience.

Some examples, such as the ADO.NET Data Services examples in Chapter 16, require an installation

of Internet Information Server(IIS) as well. Other code samples presented in the book may have specic

requirements, such as the Entity Framework 4 samples, which require the .NET Framework 3.5. We’ve added

notes to code samples that have additional requirements like these.

Apress Web Site

Visit this book’s apress.com web page at www.apress.com/9781484201466 for the complete sample code

download for this book. It’s compressed in a zip le and structured so that each subdirectory contains all the

sample code for its corresponding chapter.

We and the Apress team have made every eort to ensure that this book is free from errors and defects.

Unfortunately, thex occasional error does slip past us, despite our best eorts. In the event that you nd an

error in the book, please let us know! You can submit errors to Apress by visiting

www.apress.com/9781484201466 and lling out the form on the Errata tab.

1

Chapter 1

Foundations of T-SQL

SQL Server 2014 is the latest release of Microsoft’s enterprise-class database management system (DBMS). As

the name implies, a DBMS is a tool designed to manage, secure, and provide access to data stored in structured

collections in databases. Transact-SQL (T-SQL) is the language that SQL Server speaks. T-SQL provides query and

data-manipulation functionality, data definition and management capabilities, and security administration tools

to SQL Server developers and administrators. To communicate effectively with SQL Server, you must have a solid

understanding of the language. In this chapter, you begin exploring T-SQL on SQL Server 2014.

A Short History of T-SQL

The history of Structured Query Language (SQL), and its direct descendant Transact-SQL (T-SQL), begins

with a man. Specifically, it all began in 1970 when Dr. E. F. Codd published his influential paper “A Relational

Model of Data for Large Shared Data Banks” in the Communications of the Association for Computing

Machinery (ACM). In his seminal paper, Dr. Codd introduced the definitive standard for relational databases.

IBM went on to create the first relational database management system, known as System R. It subsequently

introduced the Structured English Query Language (SEQUEL, as it was known at the time) to interact with

this early database to store, modify, and retrieve data. The name of this early query language was later

changed from SEQUEL to the now-common SQL due to a trademark issue.

Fast-forward to 1986, when the American National Standards Institute (ANSI) officially approved the

first SQL standard, commonly known as the ANSI SQL-86 standard. The original versions of Microsoft SQL

Server shared a common code base with the Sybase SQL Server product. This changed with the release

of SQL Server 7.0, when Microsoft partially rewrote the code base. Microsoft has since introduced several

iterations of SQL Server, including SQL Server 2000, SQL Server 2005, SQL Server 2008, SQL 2008 R2,

SQL 2012, and now SQL Server 2014. This book focuses on SQL Server 2014, which further extends the

capabilities of T-SQL beyond what was possible in previous releases.

Imperative vs. Declarative Languages

SQL is different from many common programming languages such as C# and Visual Basic because it’s a

declarative language. In contrast, languages such as C++, Visual Basic, C#, and even assembler language are

imperative languages. The imperative language model requires the user to determine what the end result

should be and tell the computer step by step how to achieve that result. It’s analogous to asking a cab driver to

drive you to the airport and then giving the driver turn-by-turn directions to get there. Declarative languages,

on the other hand, allow you to frame your instructions to the computer in terms of the end result. In this

model, you allow the computer to determine the best route to achieve your objective, analogous to telling the

cab driver to take you to the airport and trusting them to know the best route. The declarative model makes a

lot of sense when you consider that SQL Server is privy to a lot of “inside information.” Just like the cab driver

who knows the shortcuts, traffic conditions, and other factors that affect your trip, SQL Server inherently knows

several methods to optimize your queries and data-manipulation operations.

CHAPTER 1 ■ FOUNDATIONS OF T-SQL

2

Consider Listing 1-1, which is a simple C# code snippet that reads in a flat file of names and displays

them on the screen.

Listing 1-1. C# Snippet to Read a Flat File

StreamReader sr = new StreamReader("c:\\Person_Person.txt");

string FirstName = null;

while ((FirstName = sr.ReadLine()) != null) {

Console.WriteLine(s); } sr.Dispose();

The example performs the following functions in an orderly fashion:

1. The code explicitly opens the storage for input (in this example, a flat file is used

as a “database”).

2. It reads in each record (one record per line), explicitly checking for the end of the

file.

3. As it reads the data, the code returns each record for display using

Console.Writeline().

4. Finally, it closes and disposes of the connection to the data file.

Consider what happens when you want to add a name to or delete a name from the flat-file “database.”

In those cases, you must extend the previous example and add custom routines to explicitly reorganize all

the data in the file so that it maintains proper ordering. If you want the names to be listed and retrieved

in alphabetical (or any other) order, you must write your own sort routines as well. Any type of additional

processing on the data requires that you implement separate procedural routines.

The SQL equivalent of the C# code in Listing 1-1 might look something like Listing 1-2.

Listing 1-2. SQL Query to Retrieve Names from a Table

SELECT FirstName FROM Person.Person;

Tip ■ Unless otherwise specified, you can run all the T-SQL samples in this book in the AdventureWorks 2014

or SQL 2014 In-Memory sample database using SQL Server Management Studio or SQLCMD.

To sort your data, you can simply add an ORDER BY clause to the SELECT query in Listing 1-2. With

properly designed and indexed tables, SQL Server can automatically reorganize and index your data for

efficient retrieval after you insert, update, or delete rows.

T-SQL includes extensions that allow you to use procedural syntax. In fact, you could rewrite the

previous example as a cursor to closely mimic the C# sample code. These extensions should be used with

care, however, because trying to force the imperative model on T-SQL effectively overrides SQL Server’s

built-in optimizations. More often than not, this hurts performance and makes simple projects a lot more

complex than they need to be.

One of the great assets of SQL Server is that you can invoke its power, in its native language, from nearly any

other programming language. For example, in .NET you can connect to SQL Server and issue SQL queries and

T-SQL statements to it via the System.Data.SqlClient namespace, which is discussed further in Chapter 16. This

gives you the opportunity to combine SQL’s declarative syntax with the strict control of an imperative language.

CHAPTER 1 ■ FOUNDATIONS OF T-SQL

3

SQL Basics

Before you learn about developments in T-SQL, or on any SQL-based platform for that matter, let’s make sure

we’re speaking the same language. Fortunately, SQL can be described accurately using well-defined and time-

tested concepts and terminology. Let’s begin the discussion of the components of SQL by looking at statements.

Statements

To begin with, in SQL you use statements to communicate your requirements to the DBMS. A statement is

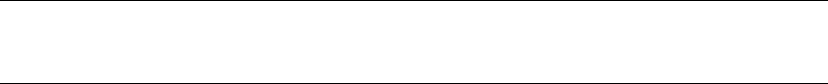

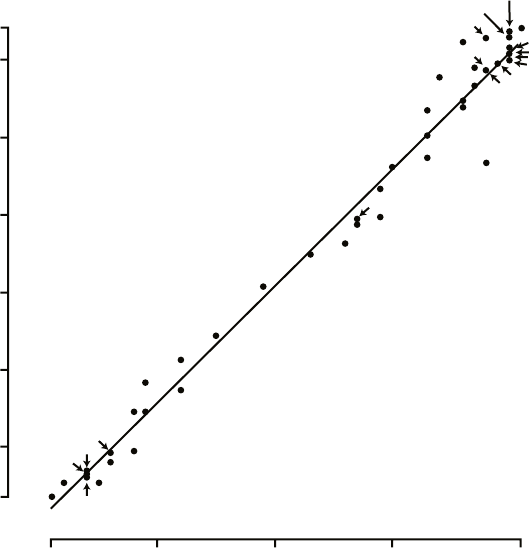

composed of several parts, as shown in Figure 1-1.

Figure 1-1. Components of a SQL statement

As you can see in the figure, SQL statements are composed of one or more clauses, some of which

may be optional depending on the statement. In the SELECT statement shown, there are three clauses: the

SELECT clause, which defines the columns to be returned by the query; the FROM clause, which indicates the

source table for the query; and the WHERE clause, which is used to limit the results. Each clause represents

a primitive operation in the relational algebra. For instance, in the example, the SELECT clause represents

a relational projection operation, the FROM clause indicates the relation, and the WHERE clause performs a

restriction operation.

Note ■ The relational model of databases is the model formulated by Dr. E. F. Codd. In the relational model,

what are known in SQL as tables are referred to as relations; hence the name. Relational calculus and relational

algebra define the basis of query languages for the relational model in mathematical terms.

OrDer OF eXeCUtION

Understanding the logical order in which SQL clauses are applied within a statement or query is

important when setting your expectations about results. Although vendors are free to physically perform

whatever operations, in any order, that they choose to fulfill a query request, the results must be the

same as if the operations were applied in a standards-defined order.

The WHERE clause in the example contains a predicate, which is a logical expression that evaluates to

one of SQL’s three possible logical results: true, false, or unknown. In this case, the WHERE clause and the

predicate limit the results to only rows in which ContactId equals 1.

CHAPTER 1 ■ FOUNDATIONS OF T-SQL

4

The SELECT clause includes an expression that is calculated during statement execution. In the example,

the expression EmailPromotion * 10 is used. This expression is calculated for every row of the result set.

SQL three-VaLUeD LOGIC

SQL institutes a logic system that may seem foreign to developers coming from other languages like

C++ or Visual Basic (or most other programming languages, for that matter). Most modern computer

languages use simple two-valued logic: a Boolean result is either true or false. SQL supports the

concept of NULL, which is a placeholder for a missing or unknown value. This results in a more complex

three-valued logic (3VL).

Let’s look at a quick example to demonstrate. If I asked you, “Is x less than 10?” your first response might

be along the lines of, “How much is x ?” If I refused to tell you what value x stood for, you would have no

idea whether x was less than, equal to, or greater than 10; so the answer to the question is neither true

nor false—it’s the third truth value, unknown. Now replace x with NULL, and you have the essence of SQL

3VL. NULL in SQL is just like a variable in an equation when you don’t know the variable’s value.

No matter what type of comparison you perform with a missing value, or which other values you compare

the missing value to, the result is always unknown. The discussion of SQL 3VL continues in Chapter 3.

The core of SQL is defined by statements that perform five major functions: querying data stored in

tables, manipulating data stored in tables, managing the structure of tables, controlling access to tables, and

managing transactions. These subsets of SQL are defined following:

• Querying: The SELECT query statement is complex. It has more optional clauses and

vendor-specific tweaks than any other statement. SELECT is concerned simply with

retrieving data stored in the database.

• Data Manipulation Language (DML): DML is considered a sublanguage of SQL.

It’s concerned with manipulating data stored in the database. DML consists of

four commonly used statements: INSERT, UPDATE, DELETE, and MERGE. DML also

encompasses cursor-related statements. These statements allow you to manipulate

the contents of tables and persist the changes to the database.

• Data Definition Language (DDL): DDL is another sublanguage of SQL. The primary

purpose of DDL is to create, modify, and remove tables and other objects from the

database. DDL consists of variations of the CREATE, ALTER, and DROP statements.

• Data Control Language (DCL): DCL is yet another SQL sublanguage. DCL’s goal is to

allow you to restrict access to tables and database objects. It’s composed of various

GRANT and REVOKE statements that allow or deny users access to database objects.

• Transactional Control Language (TCL): TCL is the SQL sublanguage that is

concerned with initiating and committing or rolling back transactions. A transaction

is basically an atomic unit of work performed by the server. TCL comprises the BEGIN

TRANSACTION, COMMIT, and ROLLBACK statements.

CHAPTER 1 ■ FOUNDATIONS OF T-SQL

5

Databases

A SQL Server instance—an individual installation of SQL Server with its own ports, logins, and databases—

can manage multiple system databases and user databases. SQL Server has five system databases, as follows:

• resource: The resource database is a read-only system database that contains all

system objects. You don’t see the resource database in the SQL Server Management

Studio (SSMS) Object Explorer window, but the system objects persisted in the

resource database logically appear in every database on the server.

• master: The master database is a server-wide repository for configuration and status

information. It maintains instance-wide metadata about SQL Server as well as

information about all databases installed on the current instance. It’s wise to avoid

modifying or even accessing the master database directly in most cases. An entire

server can be brought to its knees if the master database is corrupted. If you need to

access the server configuration and status information, use catalog views instead.

• model: The model database is used as the template from which newly created

databases are essentially cloned. Normally, you won’t want to change this database

in production settings unless you have a very specific purpose in mind and are

extremely knowledgeable about the potential implications of changing the model

database.

• msdb: The msdb database stores system settings and configuration information for

various support services, such as SQL Agent and Database Mail. Normally, you use

the supplied stored procedures and views to modify and access this data, rather than

modifying it directly.

• tempdb: The tempdb database is the main working area for SQL Server. When SQL

Server needs to store intermediate results of queries, for instance, they’re written to

tempdb. Also, when you create temporary tables, they’re actually created in tempdb.

The tempdb database is reconstructed from scratch every time you restart SQL Server.

Microsoft recommends that you use the system-provided stored procedures and catalog views to

modify system objects and system metadata, and let SQL Server manage the system databases. You should

avoid modifying the contents and structure of the system databases directly through ad hoc T-SQL. Only

modify the system objects and metadata by executing the system stored procedures and functions.

User databases are created by database administrators (DBAs) and developers on the server. These

types of databases are so called because they contain user data. The AdventureWorks2014 sample database

is one example of a user database.

Transaction Logs

Every SQL Server database has its own associated transaction log. The transaction log provides recoverability

in the event of failure and ensures the atomicity of transactions. The transaction log accumulates all changes

to the database so that database integrity can be maintained in the event of an error or other problem.

Because of this arrangement, all SQL Server databases consist of at least two files: a database file with an

.mdf extension and a transaction log with an .ldf extension.

CHAPTER 1 ■ FOUNDATIONS OF T-SQL

6

the aDVeNtUreWOrKS2014 CID teSt

SQL folks, and IT professionals in general, love their acronyms. A common acronym in the SQL world

is ACID, which stands for “atomicity, consistency, isolation, durability.” These four words form a set of

properties that database systems should implement to guarantee reliability of data storage, processing,

and manipulation:

• Atomicity : All data changes should be transactional in nature. That is, data changes

should follow an all-or-nothing pattern. The classic example is a double-entry

bookkeeping system in which every debit has an associated credit. Recording a

debit-and-credit double entry in the database is considered one transaction, or a single

unit of work. You can’t record a debit without recording its associated credit, and vice

versa. Atomicity ensures that either the entire transaction is performed or none of it is.

• Consistency : Only data that is consistent with the rules set up in the database is stored.

Data types and constraints can help enforce consistency in the database. For instance,

you can’t insert the name Meghan in an integer column. Consistency also applies

when dealing with data updates. If two users update the same row of a table at the

same time, an inconsistency could occur if one update is only partially complete when

the second update begins. The concept of isolation, described in the following bullet

point, is designed to deal with this situation.

• Isolation: Multiple simultaneous updates to the same data should not interfere with one

another. SQL Server includes several locking mechanisms and isolation levels to ensure

that two users can’t modify the exact same data at the exact same time, which could

put the data in an inconsistent state. Isolation also prevents you from even reading

uncommitted data by default.

• Durability : Data that passes all the previous tests is committed to the database.

The concept of durability ensures that committed data isn’t lost. The transaction

log and data backup and recovery features help to ensure durability.

The transaction log is one of the main tools SQL Server uses to enforce the ACID concept when storing

and manipulating data.

Schemas

SQL Server 2014 supports database schemas, which are logical groupings by the owner of database objects.

The AdventureWorks2014 sample database, for instance, contains several schemas, such as HumanResources,

Person, and Production. These schemas are used to group tables, stored procedures, views, and user-

defined functions (UDFs) for management and security purposes.

Tip ■ When you create new database objects, like tables, and don’t specify a schema, they’re automatically

created in the default schema. The default schema is normally dbo, but DBAs may assign different default

schemas to different users. Because of this, it’s always best to specify the schema name explicitly when

creating database objects.

CHAPTER 1 ■ FOUNDATIONS OF T-SQL

7

Tables

SQL Server supports several types of objects that can be created in a database. SQL stores and manages

data in its primary data structures: tables. A table consists of rows and columns, with data stored at the

intersections of these rows and columns. As an example, the AdventureWorks HumanResources.Department

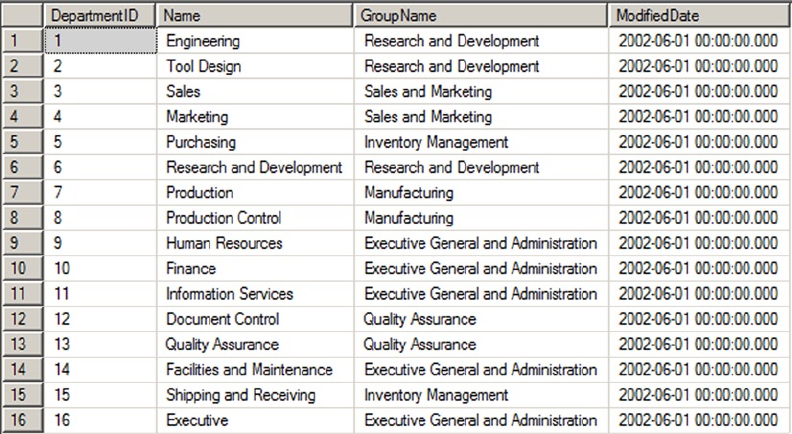

table is shown in Figure 1-2. In SQL Server 2014, you now have the option of creating a table In-Memory. This

feature allows all the table data to be stored in memory and can be accessed with extremely low latency.

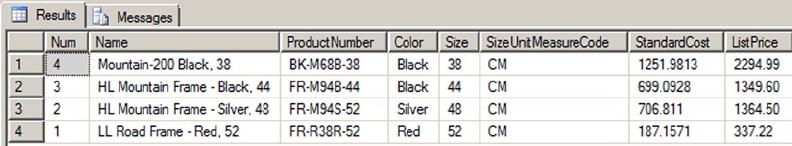

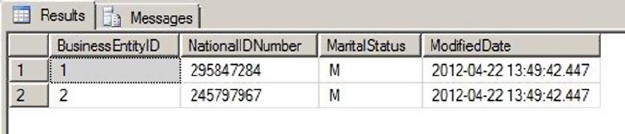

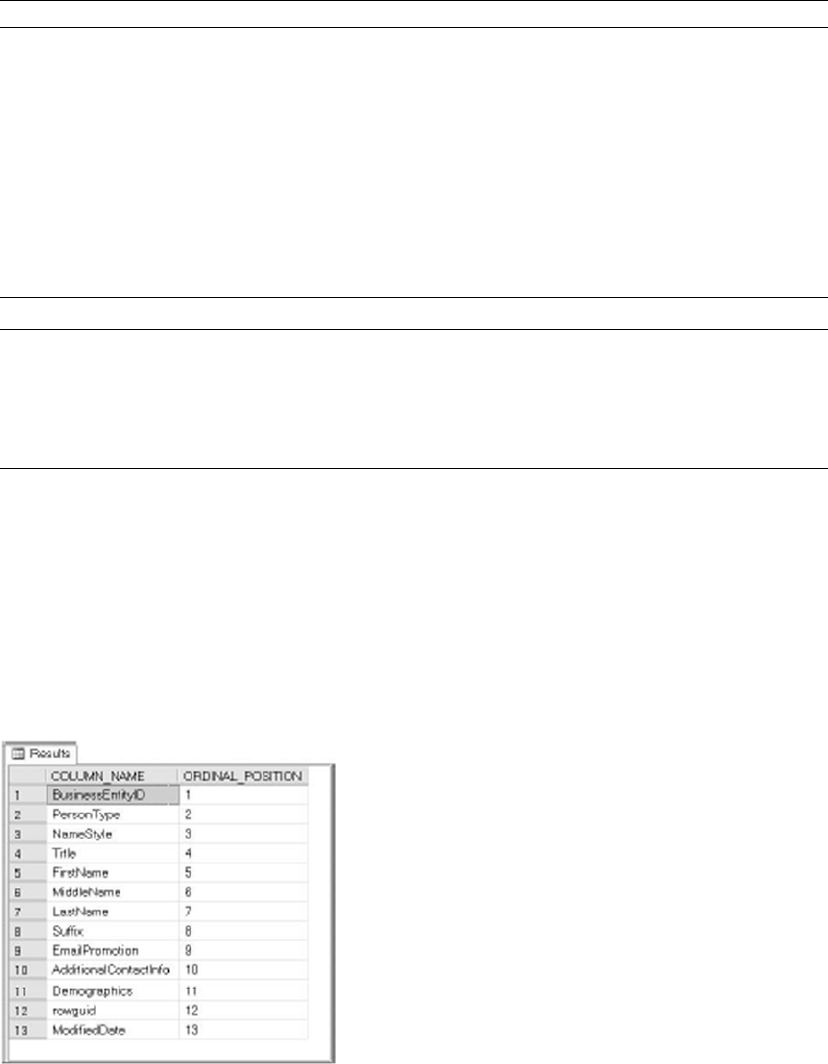

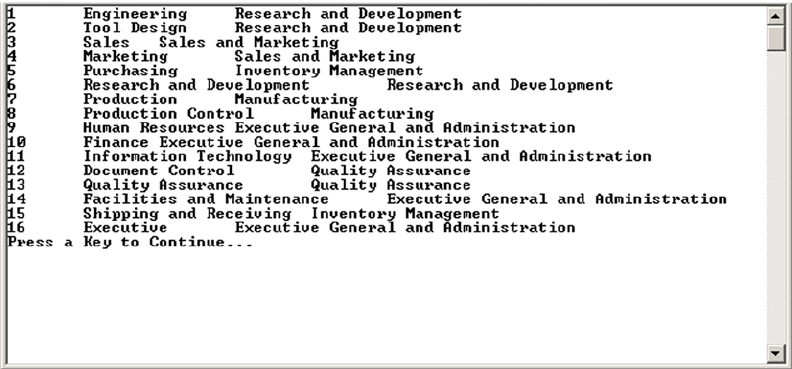

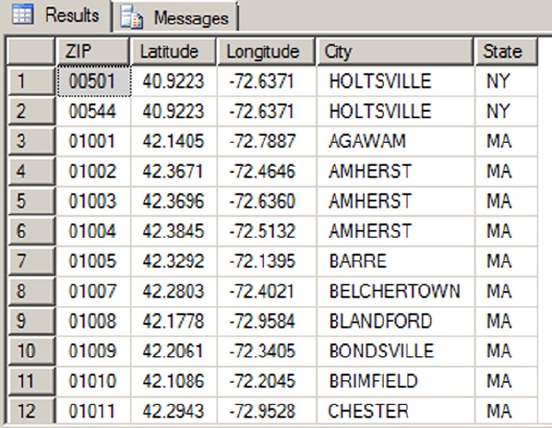

Figure 1-2. HumanResources.Department table

In the table, each row is associated with columns and each column has certain restrictions placed on

its content. These restrictions form the data domain. The data domain defines all the values a column can

contain. At the lowest level, the data domain is based on the data type of the column. For instance, a smallint

column can contain any integer values between -32,768 and +32,767.

The data domain of a column can be further constrained through the use of check constraints, triggers,

and foreign key constraints. Check constraints provide a means of automatically checking that the value of a

column is within a certain range or equal to a certain value whenever a row is inserted or updated. Triggers

can provide functionality similar to that of check constraints. Foreign key constraints allow you to declare a

relationship between the columns of one table and the columns of another table. You can use foreign key

constraints to restrict the data domain of a column to include only those values that appear in a designated

column of another table.

CHAPTER 1 ■ FOUNDATIONS OF T-SQL

8

reStrICtING the Data DOMaIN: a COMparISON

This section has given a brief overview of three methods of constraining the data domain for a column.

Each method restricts the values that can be contained in the column. Here’s a quick comparison of the

three methods:

Foreign key constraints allow SQL Server to perform an automatic check against •

another table to ensure that the values in a given column exist in the referenced table. If

the value you’re trying to update or insert in a table doesn’t exist in the referenced table,

an error is raised and any changes are rolled back. The foreign key constraint provides a

flexible means of altering the data domain, because adding values to or removing them

from the referenced table automatically changes the data domain for the referencing

table. Also, foreign key constraints offer an additional feature known as cascading

declarative referential integrity (DRI), which automatically updates or deletes rows from

a referencing table if an associated row is removed from the referenced table.

Check constraints provide a simple, efficient, and effective tool for ensuring that the •

values being inserted or updated in a column(s) are within a given range or a member

of a given set of values. Check constraints, however, aren’t as flexible as foreign key

constraints and triggers because the data domain is normally defined using hard-coded

constant values or logical expressions.

Triggers are stored procedures attached to insert, update, or delete events on a table or •

view. Triggers can be set on DML or DDL events. Both DML and DDL triggers provide a

flexible solution for constraining data, but they may require more maintenance than the

other options because they’re essentially a specialized form of stored procedure. Unless

they’re extremely well designed, triggers have the potential to be much less efficient

than other methods of constraining data. Generally triggers are avoided in modern

databases in favor of more efficient methods of constraining data. The exception to this

is when you’re trying to enforce a foreign key constraint across databases, because

SQL Server doesn’t support cross-database foreign key constraints.

Which method you use to constrain the data domain of your column(s) needs to be determined by your

project-specific requirements on a case-by-case basis.

Views

A view is like a virtual table—the data it exposes isn’t stored in the view object itself. Views are composed of

SQL queries that reference tables and other views, but they’re referenced just like tables in queries. Views

serve two major purposes in SQL Server: they can be used to hide the complexity of queries, and they can be

used as a security device to limit the rows and columns of a table that a user can query. Views are expanded,

meaning their logic is incorporated into the execution plan for queries when you use them in queries and

DML statements. SQL Server may not be able to use indexes on the base tables when the view is expanded,

resulting in less-than-optimal performance when querying views in some situations.

To overcome the query performance issues with views, SQL Server also has the ability to create a special

type of view known as an indexed view. An indexed view is a view that SQL Server persists to the database

like a table. When you create an indexed view, SQL Server allocates storage for it and allows you to query

it like any other table. There are, however, restrictions on inserting into, updating, and deleting from an

CHAPTER 1 ■ FOUNDATIONS OF T-SQL

9

indexed view. For instance, you can’t perform data modifications on an indexed view if more than one of the

view’s base tables will be affected. You also can’t perform data modifications on an indexed view if the view

contains aggregate functions or a DISTINCT clause.

You can also create indexes on an indexed view to improve query performance. The downside to an

indexed view is increased overhead when you modify data in the view’s base tables, because the view must

be updated as well.

Indexes

Indexes are SQL Server’s mechanisms for optimizing access to data. SQL Server 2014 supports several types

of indexes, including the following:

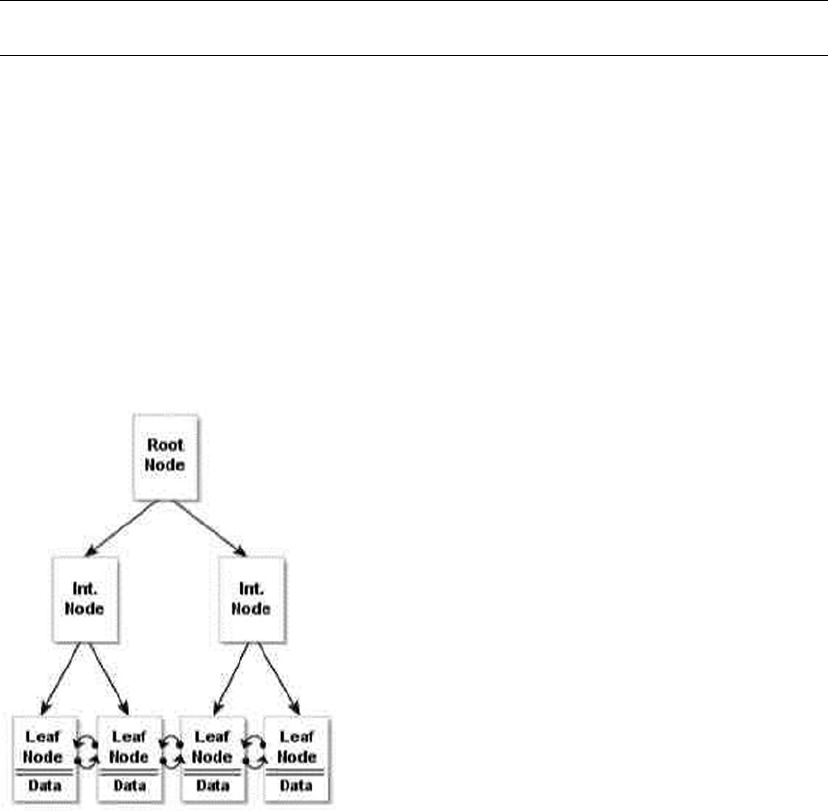

• Clustered index: A clustered index is limited to one per table. This type of index

defines the ordering of the rows in the table. A clustered index is physically

implemented using a b-tree structure with the data stored in the leaf levels of the

tree. Clustered indexes order the data in a table in much the same way that a phone

book is ordered by last name. A table with a clustered index is referred to as a

clustered table, whereas a table with no clustered index is referred to as a heap.

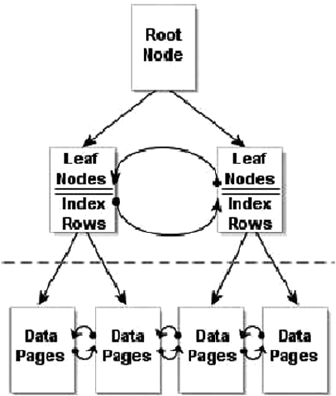

• Nonclustered index: A nonclustered index is also a b-tree index managed by SQL

Server. In a nonclustered index, index rows are included in the leaf levels of the b-tree.

Because of this, nonclustered indexes have no effect on the ordering of rows in a table.

The index rows in the leaf levels of a nonclustered index consist of the following:

A nonclustered key value•

A row locator, which is the clustered index key on a table with a clustered index, •

or a SQL-generated row ID for a heap

Nonkey columns, which are added via the • INCLUDE clause of the CREATE INDEX

statement

• Columnstore index: A columnstore index is a special index used for very large tables

(>100 million rows) and is mostly applicable to large data-warehouse

implementations. A columnstore index creates an index on the column as opposed

to the row and allows for efficient and extremely fast retrieval of large data sets. Prior

to SQL Server 2014, tables with columnstore indexes were required to be read-

only. In SQL Server 2014, columnstore indexes are now updateable. This feature is

discussed further in Chapter 6.

• XML index: SQL Server supports special indexes designed to help efficiently query

XML data. See Chapter 11 for more information.

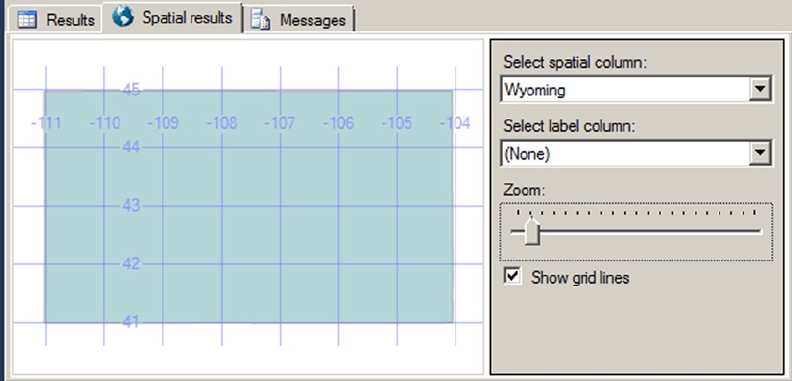

• Spatial index: A spatial index is an interesting new indexing structure to support

efficient querying of the new geometry and geography data types. See Chapter 2 for

more information.

• Full-text index: A full-text index (FTI) is a special index designed to efficiently

perform full-text searches of data and documents.

CHAPTER 1 ■ FOUNDATIONS OF T-SQL

10

• Memory-optimized index: SQL Server 2014 introduced In-Memory tables that bring with

them new index types. These types of indexes only exist in memory and must be created

with the initial table creation. These index types are covered at length in Chapter 6:

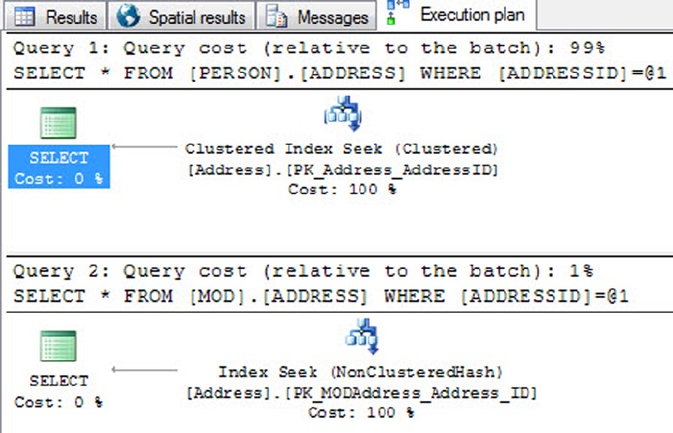

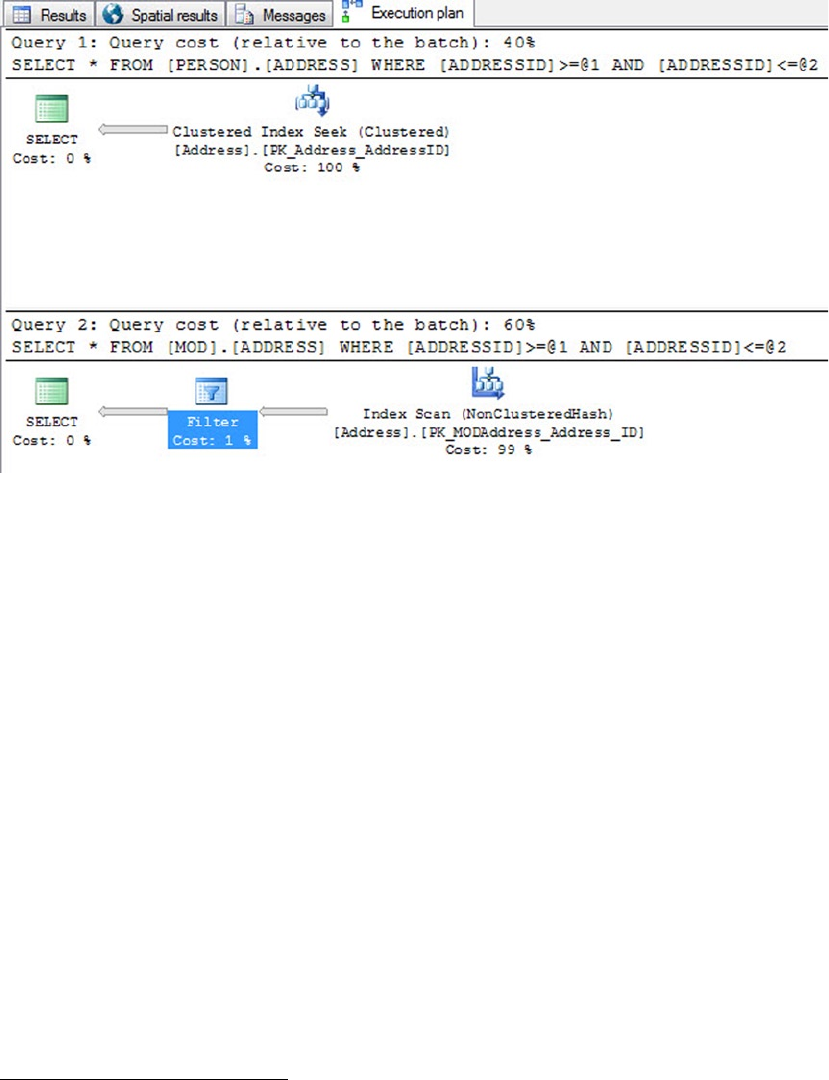

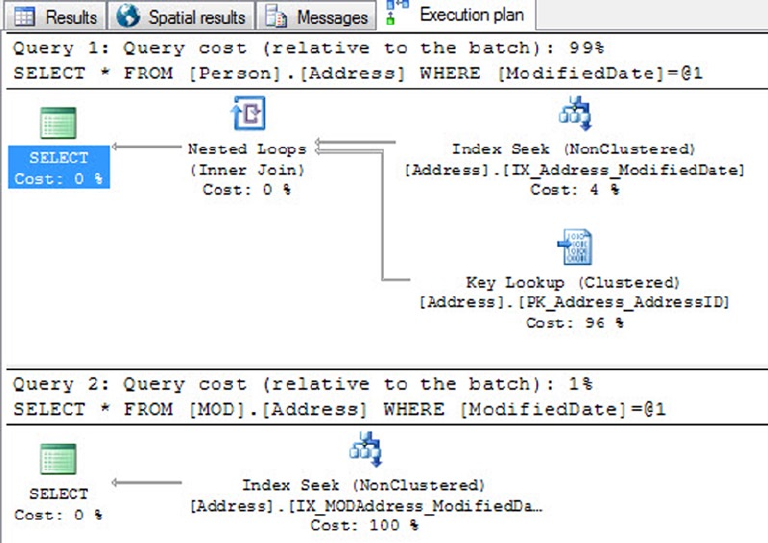

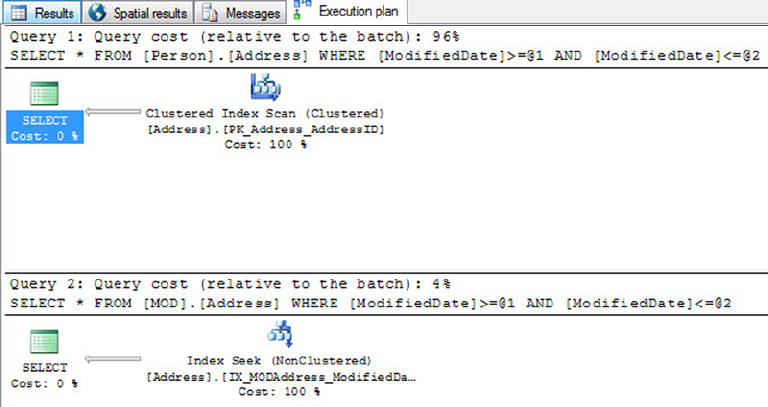

• Nonclustered hash index: This type of index is most efficient in scenarios where

the query will return values for a specific value criteria. For example, SELECT *

FROM <Table> WHERE <Column> = @<ColumnValue>.

• Memory-optimized nonclustered index: This type of index supports the same

functions as a hash index, in addition to seek operations and sort ordering.

You can also include nonkey columns in your nonclustered indexes with the INCLUDE clause of the CREATE

INDEX statement. The included columns give you the ability to work around SQL Server’s index size limitations.

Stored Procedures

SQL Server supports the installation of server-side T-SQL code modules via stored procedures (SPs). It’s very

common to use SPs as a sort of intermediate layer or custom server-side application programming interface

(API) that sits between user applications and tables in the database. Stored procedures that are specifically

designed to perform queries and DML statements against the tables in a database are commonly referred to

as CRUD (create, read, update, delete) procedures.

User-Defined Functions

User-defined functions (UDFs) can perform queries and calculations, and return either scalar values or

tabular result sets. UDFs have certain restrictions placed on them. For instance, they can’t use certain

nondeterministic system functions, nor can they perform DML or DDL statements, so they can’t make

modifications to the database structure or content. They can’t perform dynamic SQL queries or change the

state of the database (cause side effects).

SQL CLR Assemblies

SQL Server 2014 supports access to Microsoft .NET functionality via the SQL Common Language Runtime

(SQL CLR). To access this functionality, you must register compiled .NET SQL CLR assemblies with the

server. The assembly exposes its functionality through class methods, which can be accessed via SQL CLR

functions, procedures, triggers, user-defined types, and user-defined aggregates. SQL CLR assemblies

replace the deprecated SQL Server extended stored procedure (XP) functionality available in prior releases.

Tip ■ Avoid using extended stored procedures (XPs) on SQL Server 2014. The same functionality provided

by XPs can be provided by SQL CLR code. The SQL CLR model is more robust and secure than the XP model.

Also keep in mind that the XP library is deprecated, and XP functionality may be completely removed in a future

version of SQL Server.

Elements of Style

Now that you’ve had a broad overview of the basics of SQL Server, let’s look at some recommended

development tips to help with code maintenance. Selecting a particular style and using it consistently helps

immensely with both debugging and future maintenance. The following sections contain some general

recommendations to make your T-SQL code easy to read, debug, and maintain.

CHAPTER 1 ■ FOUNDATIONS OF T-SQL

11

Whitespace

SQL Server ignores extra whitespace between keywords and identifiers in SQL queries and statements. A

single statement or query may include extra spaces and tab characters and can even extend across several

lines. You can use this knowledge to great advantage. Consider Listing 1-3, which is adapted from the

HumanResources.vEmployee view in the AdventureWorks2014 database.

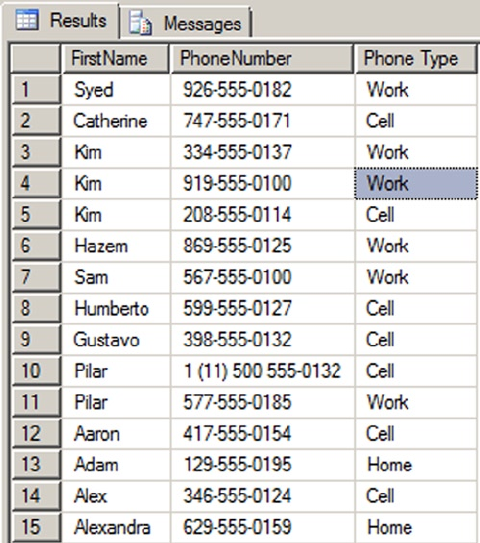

Listing 1-3. The HumanResources.vEmployee View from the AdventureWorks2014 Database

SELECT e.BusinessEntityID, p.Title, p.FirstName, p.MiddleName, p.LastName, p.Suffix,

e.JobTitle, pp.PhoneNumber, pnt.Name AS PhoneNumberType, ea.EmailAddress,

p.EmailPromotion, a.AddressLine1, a.AddressLine2, a.City, sp.Name AS StateProvinceName,

a.PostalCode, cr.Name AS CountryRegionName, p.AdditionalContactInfo

FROM HumanResources.Employee AS e INNER JOIN Person.Person AS p ON p.BusinessEntityID =

e.BusinessEntityID INNER JOIN Person.BusinessEntityAddress AS bea ON bea.BusinessEntityID

= e.BusinessEntityID INNER JOIN Person.Address AS a ON a.AddressID = bea.AddressID INNER

JOIN Person.StateProvince AS sp ON sp.StateProvinceID = a.StateProvinceID INNER JOIN Person.

CountryRegion AS cr ON cr.CountryRegionCode = sp.CountryRegionCode LEFT OUTER JOIN Person.

PersonPhone AS pp ON pp.BusinessEntityID = p.BusinessEntityID LEFT OUTER JOIN Person.

PhoneNumberType AS pnt ON pp.PhoneNumberTypeID = pnt.PhoneNumberTypeID LEFT OUTER JOIN

Person.EmailAddress AS ea ON p.BusinessEntityID = ea.BusinessEntityID

This query will run and return the correct result, but it’s very hard to read. You can use whitespace and

table aliases to generate a version that is much easier on the eyes, as demonstrated in Listing 1-4.

Listing 1-4. The HumanResources.vEmployee View Reformatted for Readability

SELECT

e.BusinessEntityID,

p.Title,

p.FirstName,

p.MiddleName,

p.LastName,

p.Suffix,

e.JobTitle,

pp.PhoneNumber,

pnt.Name AS PhoneNumberType,

ea.EmailAddress,

p.EmailPromotion,

a.AddressLine1,

a.AddressLine2,

a.City,

sp.Name AS StateProvinceName,

a.PostalCode,

cr.Name AS CountryRegionName,

p.AdditionalContactInfo

FROM HumanResources.Employee AS e INNER JOIN Person.Person AS p

ON p.BusinessEntityID = e.BusinessEntityID

INNER JOIN Person.BusinessEntityAddress AS bea

ON bea.BusinessEntityID = e.BusinessEntityID

INNER JOIN Person.Address AS a

CHAPTER 1 ■ FOUNDATIONS OF T-SQL

12

ON a.AddressID = bea.AddressID

INNER JOIN Person.StateProvince AS sp

ON sp.StateProvinceID = a.StateProvinceID

INNER JOIN Person.CountryRegion AS cr

ON cr.CountryRegionCode = sp.CountryRegionCode

LEFT OUTER JOIN Person.PersonPhone AS pp

ON pp.BusinessEntityID = p.BusinessEntityID

LEFT OUTER JOIN Person.PhoneNumberType AS pnt

ON pp.PhoneNumberTypeID = pnt.PhoneNumberTypeID

LEFT OUTER JOIN Person.EmailAddress AS ea

ON p.BusinessEntityID = ea.BusinessEntityID;

Notice that the ON keywords are indented, associating them visually with the INNER JOIN operators

directly before them in the listing. The column names on the lines directly after the SELECT keyword are also

indented, associating them visually with SELECT. This particular style is useful in helping visually break up a

query into sections. The personal style you decide on may differ from this one, but once you’ve decided on a

standard indentation style, be sure to apply it consistently throughout your code.

Code that is easy to read is easier to debug and maintain. The code in Listing 1-4 uses table aliases, plenty

of whitespace, and the semicolon (;) terminator to mark the end of SELECT statements, to make the code more

readable. (It’s a good idea to get into the habit of using the terminating semicolon in your SQL queries—it’s

required in some instances.)

Tip ■ Semicolons are required terminators for some statements in SQL Server 2014. Instead of trying to

remember all the special cases where they are or aren’t required, it’s a good idea to use the semicolon

statement terminator throughout your T-SQL code. You’ll notice the use of semicolon terminators in all the

examples in this book.

Naming Conventions

SQL Server allows you to name your database objects (tables, views, procedures, and so on) using just about

any combination of up to 128 characters (116 characters for local temporary table names), as long as you

enclose them in single quotes ('') or brackets ([ ]). Just because you can, however, doesn’t necessarily

mean you should. Many of the allowed characters are hard to differentiate from other similar-looking

characters, and some may not port well to other platforms. The following suggestions will help you avoid

potential problems:

Use alphabetic characters (A–Z, a–z, and Unicode Standard 3.2 letters) for the first •

character of your identifiers. The obvious exceptions are SQL Server variable names

that start with the at (@) sign, temporary tables and procedures that start with the

number sign (#), and global temporary tables and procedures that begin with a

double number sign (##).

Many built-in T-SQL functions and system variables have names that begin with •

a double at sign (@@), such as @@ERR0R and @@IDENTITY. To avoid confusion and

possible conflicts, don’t use a leading double at sign to name your identifiers.

Restrict the remaining characters in your identifiers to alphabetic characters (A–Z, •

a–z, and Unicode Standard 3.2 letters), numeric digits (0–9), and the underscore

character (_). The dollar sign ($) character, although allowed, isn’t advisable.

CHAPTER 1 ■ FOUNDATIONS OF T-SQL

13

Avoid embedded spaces, punctuation marks (other than the underscore character), •

and other special characters in your identifiers.

Avoid using SQL Server 2014 reserved keywords as identifiers. You can find the list •

here: http://msdn.microsoft.com/en-us/library/ms189822.aspx.

Limit the length of your identifiers. Thirty-two characters or less is a reasonable limit •

while not being overly restrictive. Much more than that becomes cumbersome to

type and can hurt your code readability.

Finally, to make your code more readable, select a capitalization style for your identifiers and code, and

use it consistently. My preference is to fully capitalize T-SQL keywords and use mixed-case and underscore

characters to visually break up identifiers into easily readable words. Using all capital characters or

inconsistently applying mixed case to code and identifiers can make your code illegible and hard to maintain.

Consider the example query in Listing 1-5.

Listing 1-5. All-Capital SELECT Query

SELECT P.BUSINESSENTITYID, P.FIRSTNAME, P.LASTNAME, S.SALESYTD

FROM PERSON.PERSON P INNER JOIN SALES.SALESPERSON SP

ON P.BUSINESSENTITYID = SP.BUSINESSENTITYID;

The all-capital version is difficult to read. It’s hard to tell the SQL keywords from the column and

table names at a glance. Compound words for column and table names aren’t easily identified. Basically,

your eyes have to work a lot harder to read this query than they should, which makes otherwise simple

maintenance tasks more difficult. Reformatting the code and identifiers makes this query much easier on the

eyes, as Listing 1-6 demonstrates.

Listing 1-6. Reformatted, Easy-on-the-Eyes Query

SELECT

p.BusinessEntityID,

p.FirstName,

p.LastName,

sp.SalesYTD

FROM Person.Person p INNER JOIN Sales.SalesPerson sp

ON p.BusinessEntityID = sp.BusinessEntityID;

The use of all capitals for the keywords in the second version makes them stand out from the mixed-

case table and column names. Likewise, the mixed-case column and table names make the compound word

names easy to recognize. The net effect is that the code is easier to read, which makes it easier to debug and

maintain. Consistent use of good formatting habits helps keep trivial changes trivial and makes complex

changes easier.

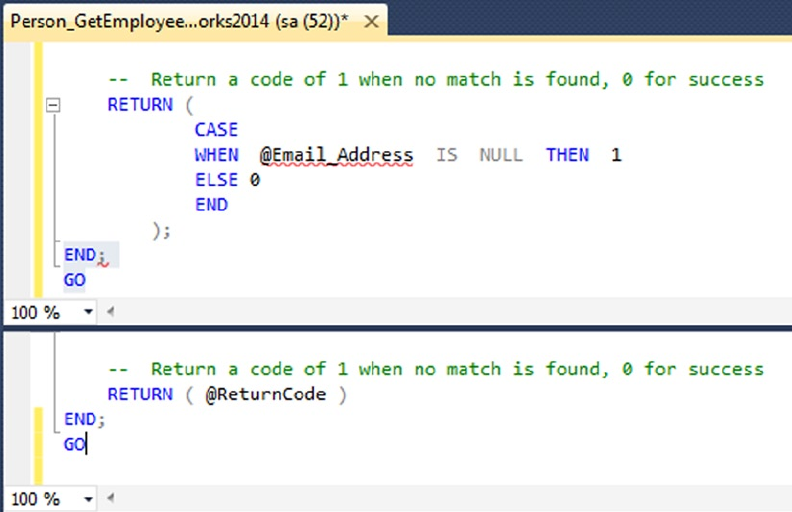

One Entry, One Exit

When writing SPs and UDFs, it’s good programming practice to use the “one entry, one exit” rule. SPs and

UDFs should have a single entry point and a single exit point (RETURN statement).

CHAPTER 1 ■ FOUNDATIONS OF T-SQL

14

The SP in Listing 1-7 is a simple procedure with one entry point and several exit points. It retrieves the

ContactTypelD number from the AdventureWorks2014 Person.ContactType table for the ContactType

name passed into it. If no ContactType exists with the name passed in, a new one is created, and the newly

created ContactTypelD is passed back.

Listing 1-7. Stored Procedure Example with One Entry and Multiple Exits

CREATE PROCEDURE dbo.GetOrAdd_ContactType

(

@Name NVARCHAR(50),

@ContactTypeID INT OUTPUT

)

AS

DECLARE @Err_Code AS INT;

SELECT @Err_Code = 0;

SELECT @ContactTypeID = ContactTypeID

FROM Person.ContactType

WHERE [Name] = @Name;

IF @ContactTypeID IS NOT NULL

RETURN; -- Exit 1: if the ContactType exists

INSERT

INTO Person.ContactType ([Name], ModifiedDate)

SELECT @Name, CURRENT_TIMESTAMP;

SELECT @Err_Code = 'error';

IF @Err_Code <> 0

RETURN @Err_Code; -- Exit 2: if there is an error on INSERT

SELECT @ContactTypeID = SCOPE_IDENTITY();

RETURN @Err_Code; -- Exit 3: after successful INSERT

GO

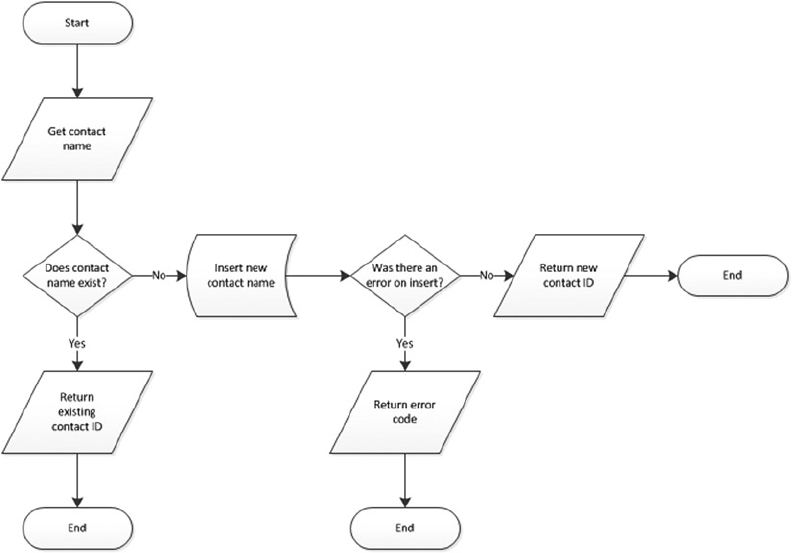

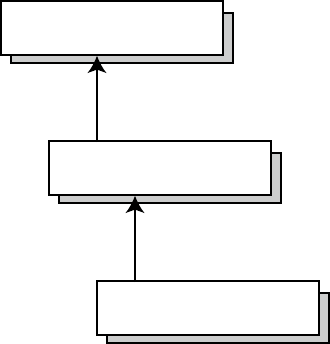

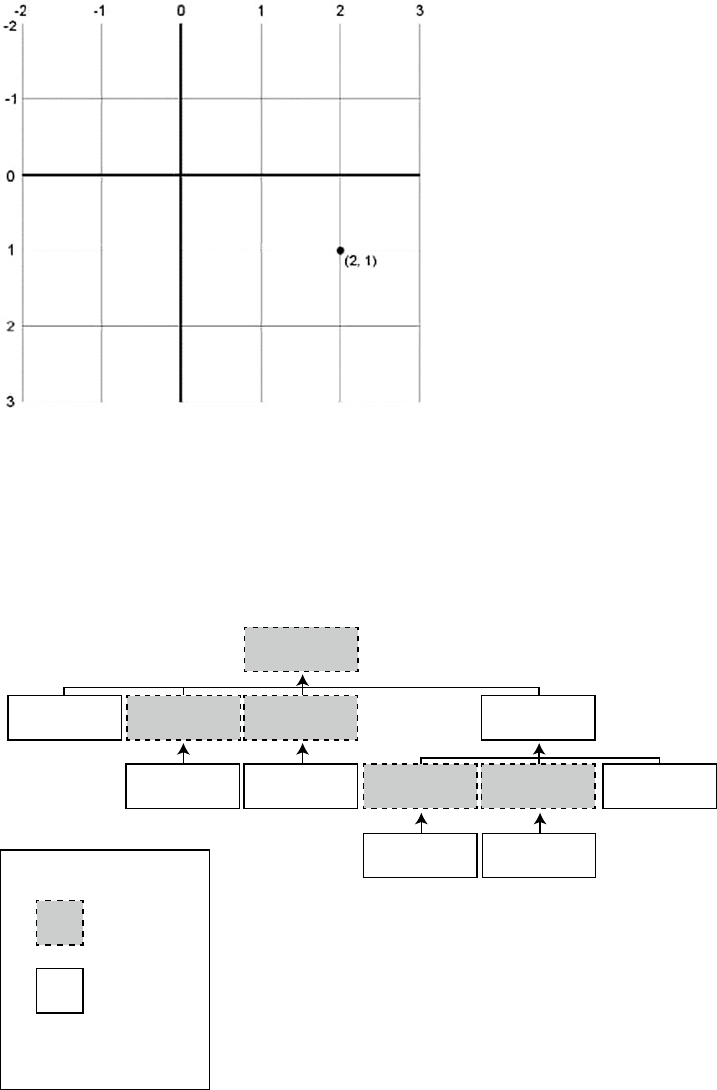

This code has one entry point but three possible exit points. Figure 1-3 shows a simple flowchart for the

paths this code can take.

CHAPTER 1 ■ FOUNDATIONS OF T-SQL

15

As you can imagine, maintaining code such as that in Listing 1-7 becomes more difficult because the

flow of the code has so many possible exit points, each of which must be accounted for when you make

modifications to the SP. Listing 1-8 updates Listing 1-7 to give it a single entry point and a single exit point,

making the logic easier to follow.

Listing 1-8. Stored Procedure with One Entry and One Exit

CREATE PROCEDURE dbo.GetOrAdd_ContactType

(

@Name NVARCHAR(50),

@ContactTypeID INT OUTPUT

)

AS

DECLARE @Err_Code AS INT;

SELECT @Err_Code = 0;

SELECT @ContactTypeID = ContactTypeID

FROM Person.ContactType

WHERE [Name] = @Name;

IF @ContactTypeID IS NULL

BEGIN

Figure 1-3. Flowchart for an example with one entry and multiple exits

CHAPTER 1 ■ FOUNDATIONS OF T-SQL

16

INSERT

INTO Person.ContactType ([Name], ModifiedDate)

SELECT @Name, CURRENT_TIMESTAMP;

SELECT @Err_Code = @@error;

IF @Err_Code = 0 -- If there's an error, skip next

SELECT @ContactTypeID = SCOPE_IDENTITY();

END

RETURN @Err_Code; -- Single exit point

GO

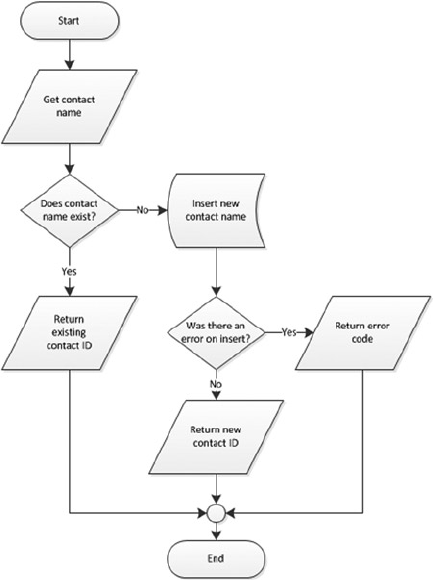

Figure 1-4 shows the modified flowchart for this new version of the SP.

Figure 1-4. Flowchart for an example with one entry and one exit

The one entry and one exit model makes the logic easier to follow, which in turn makes the code easier

to manage. This rule also applies to looping structures, which you implement via the WHILE statement

in T-SQL. Avoid using the WHILE loop’s CONTINUE and BREAK statements and the GOTO statement; these

statements lead to old-fashioned, difficult-to-maintain spaghetti code.

CHAPTER 1 ■ FOUNDATIONS OF T-SQL

17

Defensive Coding

Defensive coding involves anticipating problems before they occur and mitigating them through good coding

practices. The first and foremost lesson of defensive coding is to always check user input. Once you open your

system to users, expect them to do everything in their power to try to break your system. For instance, if you

ask users to enter a number between 1 and 10, expect that they’ll ignore your directions and key in ; DROP

TABLE dbo.syscomments; -- at the first available opportunity. Defensive coding practices dictate that you

should check and scrub external inputs. Don’t blindly trust anything that comes from an external source.

Another aspect of defensive coding is a clear delineation between exceptions and run-of-the-mill

issues. The key is that exceptions are, well, exceptional in nature. Ideally, exceptions should be caused by

errors that you can’t account for or couldn’t reasonably anticipate, like a lost network connection or physical

corruption of your application or data storage. Errors that can be reasonably expected, like data-entry errors,

should be captured before they’re raised to the level of exceptions. Keep in mind that exceptions are often

resource-intensive, expensive operations. If you can avoid an exception by anticipating a particular problem,

your application will benefit in both performance and control. SQL Server 2012 introduced a valuable

new error-handling feature called THROW. The TRY/CATCH/THROW statements are discussed in more detail in

Chapter 18.

The SELECT * Statement

Consider the SELECT * style of querying. In a SELECT clause, the asterisk (*) is a shorthand way of specifying

that all columns in a table should be returned. Although SELECT * is a handy tool for ad hoc querying of

tables during development and debugging, you normally shouldn’t use it in a production system. One

reason to avoid this method of querying is to minimize the amount of data retrieved with each call. SELECT *

retrieves all columns, regardless of whether they’re needed by the higher-level applications. For queries that

return a large number of rows, even one or two extraneous columns can waste a lot of resources.

If the underlying table or view is altered, columns may be added to or removed from the returned result

set. This can cause errors that are hard to locate and fix. By specifying the column names, your front-end

application can be assured that only the required columns are returned by a query and that errors caused by

missing columns will be easier to locate.

As with most things, there are always exceptions—for example, if you’re using the FOR XML AUTO clause

to generate XML based on the structure and content of your relational data. In this case, SELECT * can be

quite useful, because you’re relying on FOR XML to automatically generate the node names based on the

table and column names in the source tables.

Tip ■ SELECT * should be avoided, but if you do need to use it, always try to limit the data set being

returned. One way of doing so is to make full use of the T-SQL TOP command and restrict the number of records

returned. In practice, though, you should never write SELECT * in your code—even for small tables. Small

tables today could be large tables tomorrow.

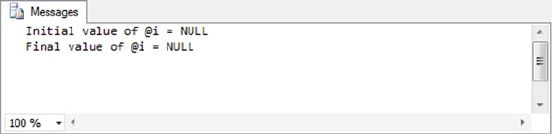

Variable Initialization

When you create SPs, UDFs, or any script that uses T-SQL user variables, you should initialize those variables

before the first use. Unlike some other programming languages that guarantee that newly declared variables

will be initialized to 0 or an empty string (depending on their data types), T-SQL guarantees only that newly

declared variables will be initialized to NULL. Consider the code snippet shown in Listing 1-9.

CHAPTER 1 ■ FOUNDATIONS OF T-SQL

18

Listing 1-9. Sample Code Using an Uninitialized Variable

DECLARE @i INT; SELECT @i = @i + 5; SELECT @i;

The result is NULL, which is a shock if you were expecting 5. Expecting SQL Server to initialize numeric

variables to 0 (like @i in the previous example) or an empty string will result in bugs that can be extremely

difficult to locate in your T-SQL code. To avoid these problems, always explicitly initialize your variables after

declaration, as demonstrated in Listing 1-10.

Listing 1-10. Sample Code Using an Initialized Variable

DECLARE @i INT = 0; -- Changed this statement to initialize @i to 0

SELECT @i = @i + 5;

SELECT @i;

Summary

This chapter has served as an introduction to T-SQL, including a brief history of SQL and a discussion of

the declarative programming style. The chapter started with a discussion of ISO SQL standard compatibility

in SQL Server 2014 and the differences between imperative and declarative languages, of which SQL is the

latter. You also saw many of the basic components of SQL, including databases, tables, views, SPs, and other

common database objects. Finally, I provided my personal recommendations for writing SQL code that is

easy to debug and maintain. I subscribe to the “eat your own dog food” theory, and throughout this book

I faithfully follow the best practice recommendations that I’ve asked you to consider.

The next chapter provides an overview of the new and improved tools available out of the box for

developers. Specifically, Chapter 2 discusses the SQLCMD text-based SQL client (originally a replacement

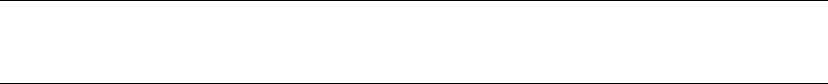

for osql), SSMS, SQL Server 2014 Books Online (BOL), and some of the other available tools that make

writing, editing, testing, and debugging easier and faster than ever.

eXerCISeS

1. Describe the difference between an imperative language and a declarative

language.

2. What does the acronym ACID stand for?

3. SQL Server 2014 supports seven different types of indexes. Two of these indexes

are newly introduced in SQL 2014. What are they?

4. Name two of the restrictions on any type of SQL Server UDF.

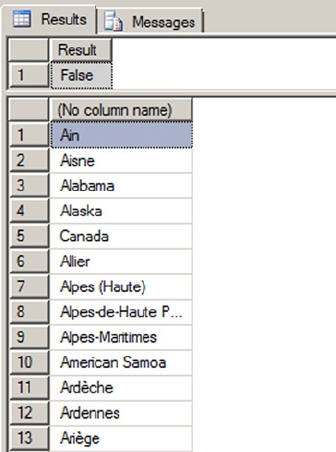

5. [True/False] In SQL Server, newly declared variables are always assigned the

default value 0 for numeric data types and an empty string for character data types.

19

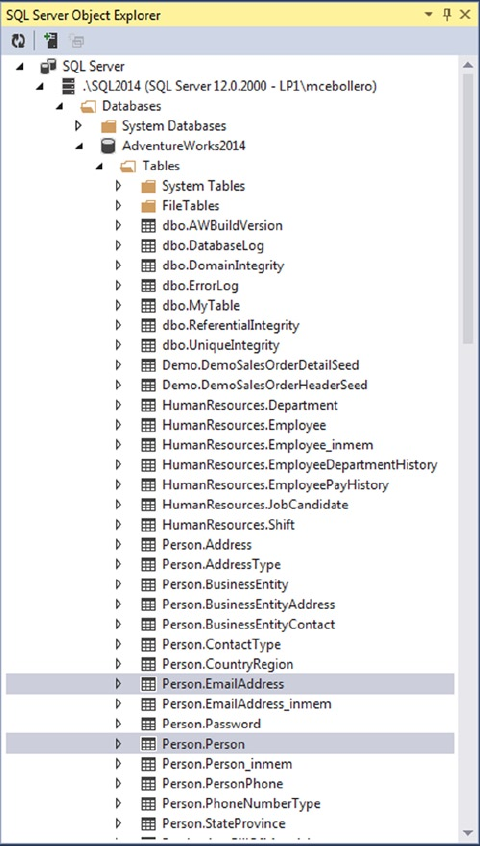

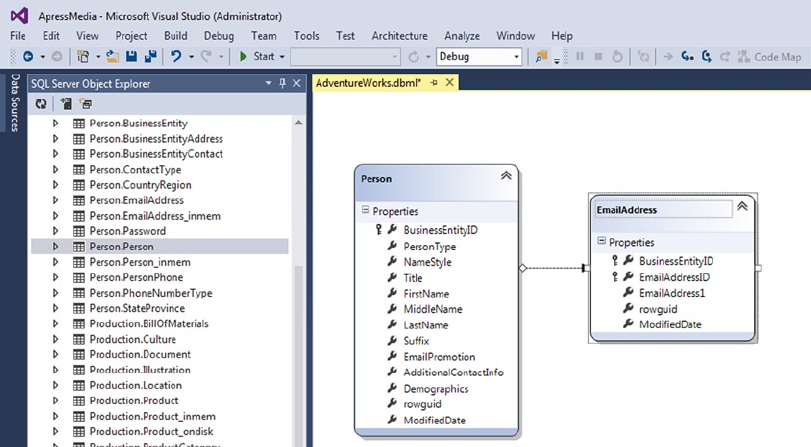

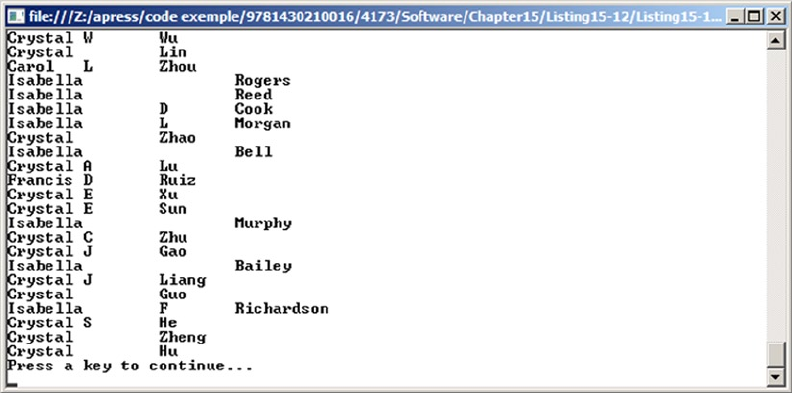

Chapter 2

Tools of the Trade

SQL Server 2014 comes with a wide selection of tools and utilities to make development easier and more

productive for developers. This chapter introduces some of the most important tools for SQL Server

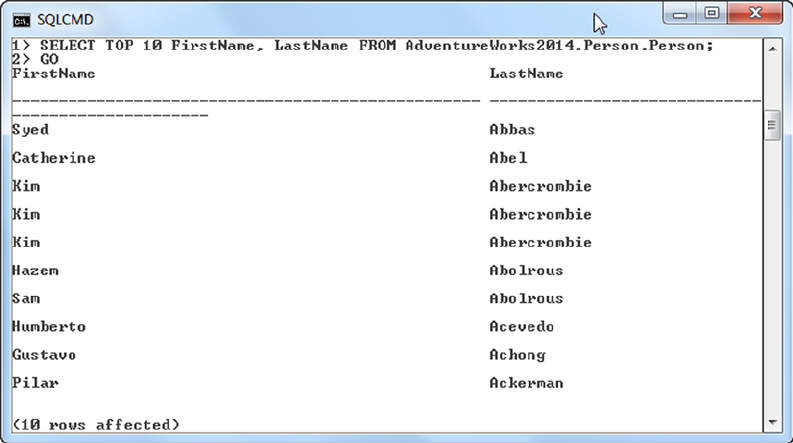

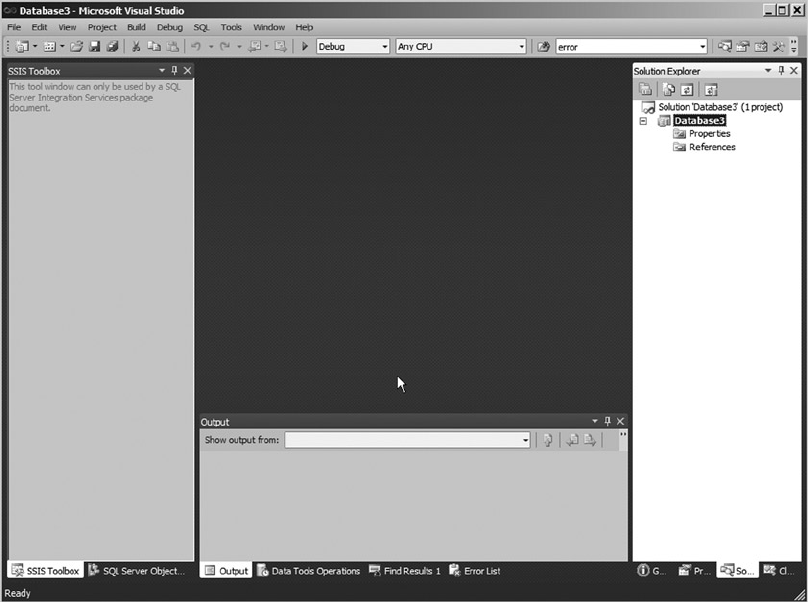

developers, including SQL Server Management Studio (SSMS) and the SQLCMD utility, SQL Server Data

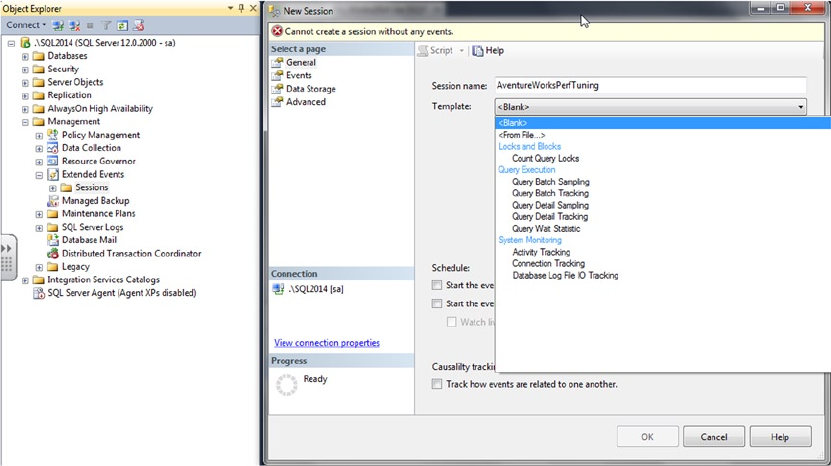

Tool add-ins to Microsoft Visual Studio, SQL Profiler, Database Tuning Advisor, Extended Events, and SQL

Server 2014 Books Online (BOL). You’re also introduced to supporting tools like SQL Server Integration

Services (SSIS), the Bulk Copy Program (BCP), and the AdventureWorks 2014 sample database, which you

use in examples throughout the book.

SQL Server Management Studio

Back in the heyday of SQL Server 2000, it was common for developers to fire up the Enterprise Manager (EM)

and Query Editor GUI database tools in rapid succession every time they sat down to write code. Historically,

developer and DBA roles in the DBMS have been highly separated, and with good reason. DBAs have historically

brought hardware and software administration and tuning skills, database design optimization experience, and

healthy doses of skepticism and security to the table. On the other hand, developers have focused on coding skills,

problem solving, system optimization, and debugging. This separation of powers works very well in production

systems, but in development environments developers are often responsible for their own database design and

management. Sometimes developers are put in charge of their own development server local security.

SQL Server 2000 EM was originally designed as a DBA tool, providing access to the graphical user

interface (GUI) administration interface, including security administration, database object creation and

management, and server management functionality. Query Editor was designed as a developer tool, the

primary GUI tool for creating, testing, and tuning queries.

SQL Server 2014 continues the tradition begun with SQL Server 2005 by combining the functionality

of both these GUI tools into a single GUI interface known as SQL Server Management Studio (SSMS).

This makes perfect sense in supporting real-world SQL Server development, where the roles of DBA and

developer are often intermingled in development environments.

Many SQL Server developers prefer the GUI administration and development tools to the text-based

query tool SQLCMD to build their databases, and on this front SSMS doesn’t disappoint. SSMS offers several

features that make development and administration easier, including the following:

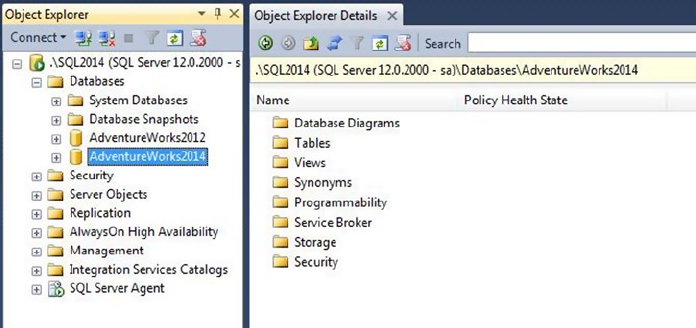

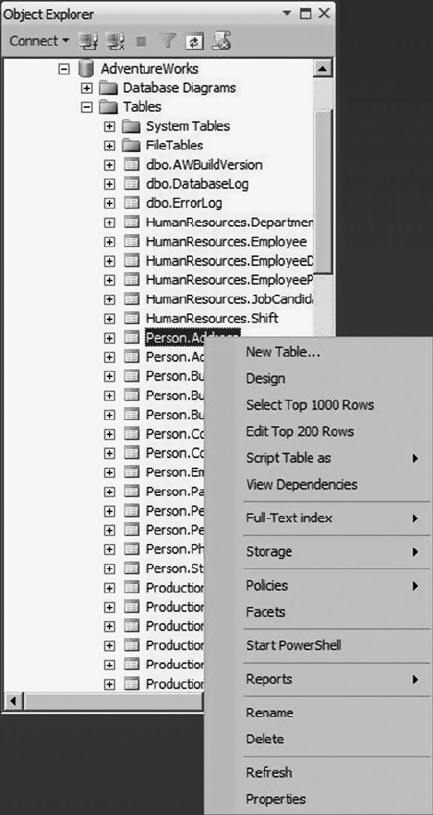

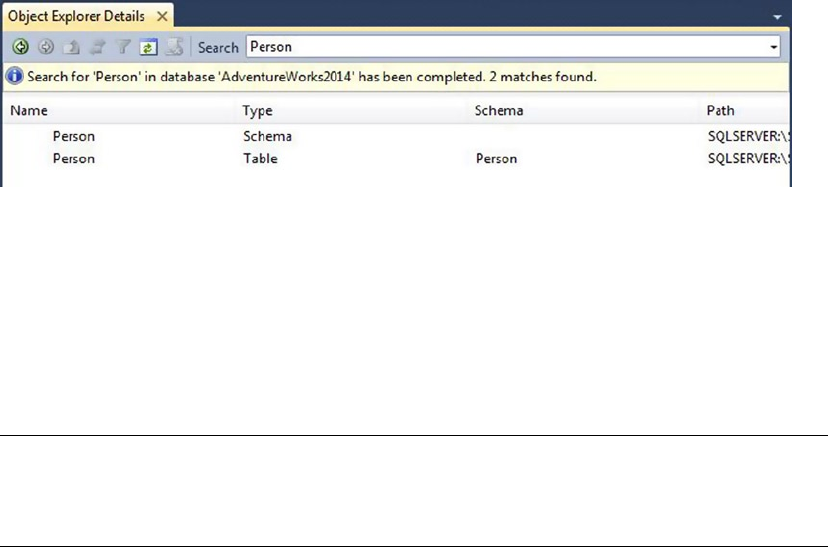

Integrated, functional Object Explorer, which provides the ability to easily view •

all the objects in the server and manage them in a tree structure. The added filter

functionality helps users narrow down the objects they want to work with.

Color coding of scripts, making editing and debugging easier.•

Enhanced keyboard shortcuts that make searching faster and easier. Additionally, •

users can map predefined keyboard shortcuts to stored procedures that are used

most often.

CHAPTER 2 ■ TOOLS OF THE TRADE

20

Two keyboard shortcut schemes: keyboard shortcuts from SQL Server 2008 R2 and •

Microsoft Visual Studio 2010 compatibility.

Usability enhancements such as the ability to zoom text in the Query Editor by •

holding the Ctrl key and scrolling to zoom in and out. Users can drag and drop tabs,

and there is true multimonitor support.

Breakpoint validation, which prevents users from setting breakpoints at invalid locations.•

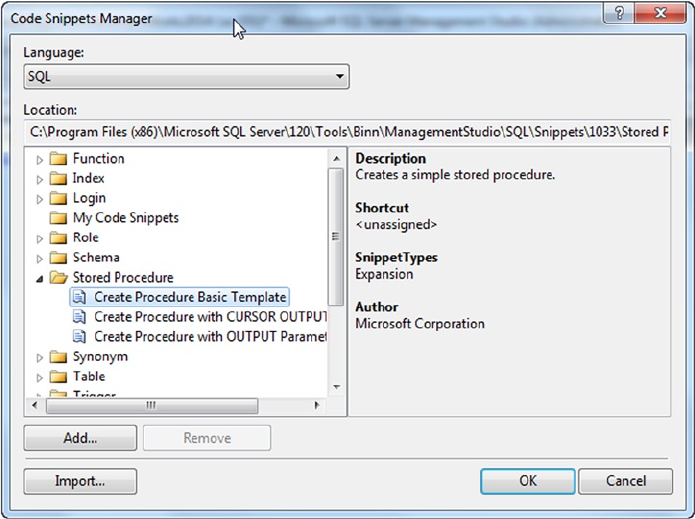

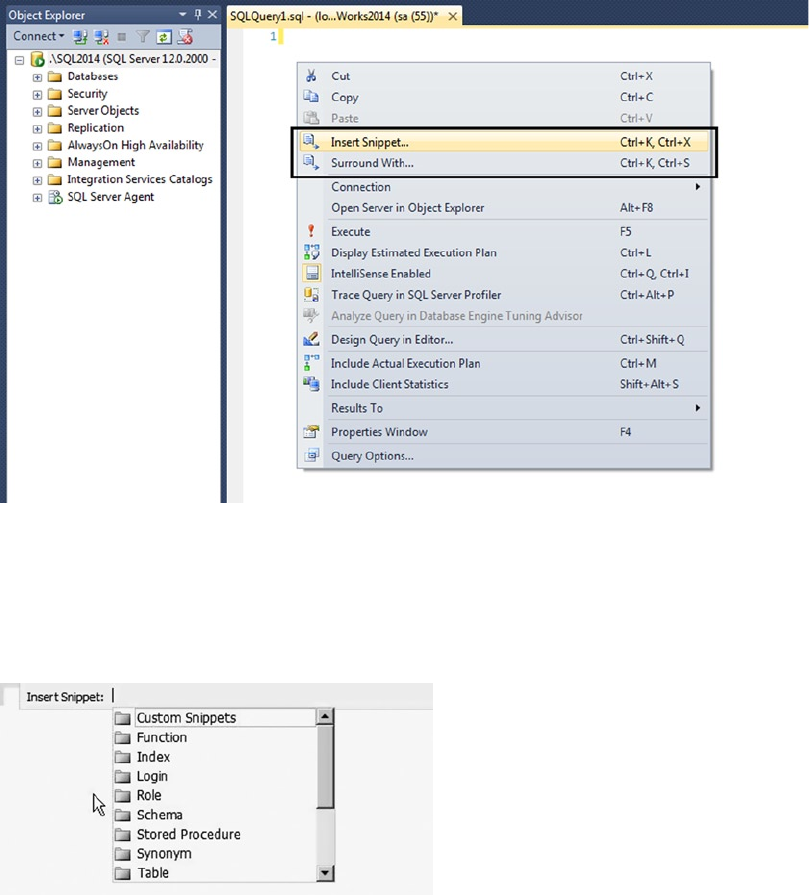

T-SQL code snippets, which are templates that can be used as starting points to build •

T-SQL statement in scripts and batches.

T-SQL Debugger Watch and Quick Watch windows, which support watching T-SQL •

expressions.

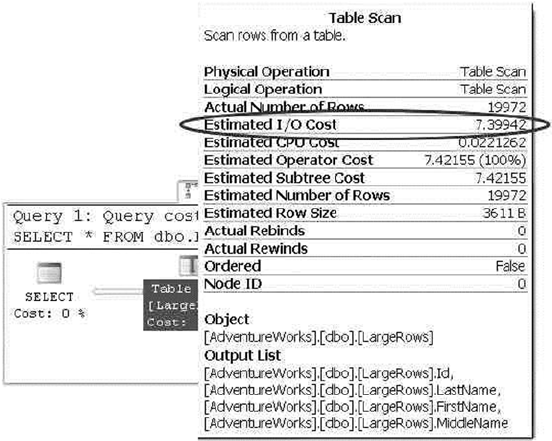

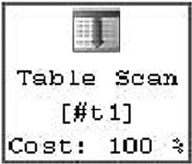

Graphical query execution plans. These are the bread and butter of the query-•

optimization process. They greatly simplify the process of optimizing complex

queries, quickly exposing potential bottlenecks in your code.

Project-management and code-version control integration, including integration •

with Team Foundation Server (TFS) and Visual SourceSafe version control systems.

SQLCMD mode, which allows you to execute SQL scripts using SQLCMD. You can •

take advantage of SQLCMD’s additional script capabilities, like scripting variables

and support for the AlwaysON feature.

SSMS also includes database and server management features, but this discussion is limited to some of

the most important developer-specific features.

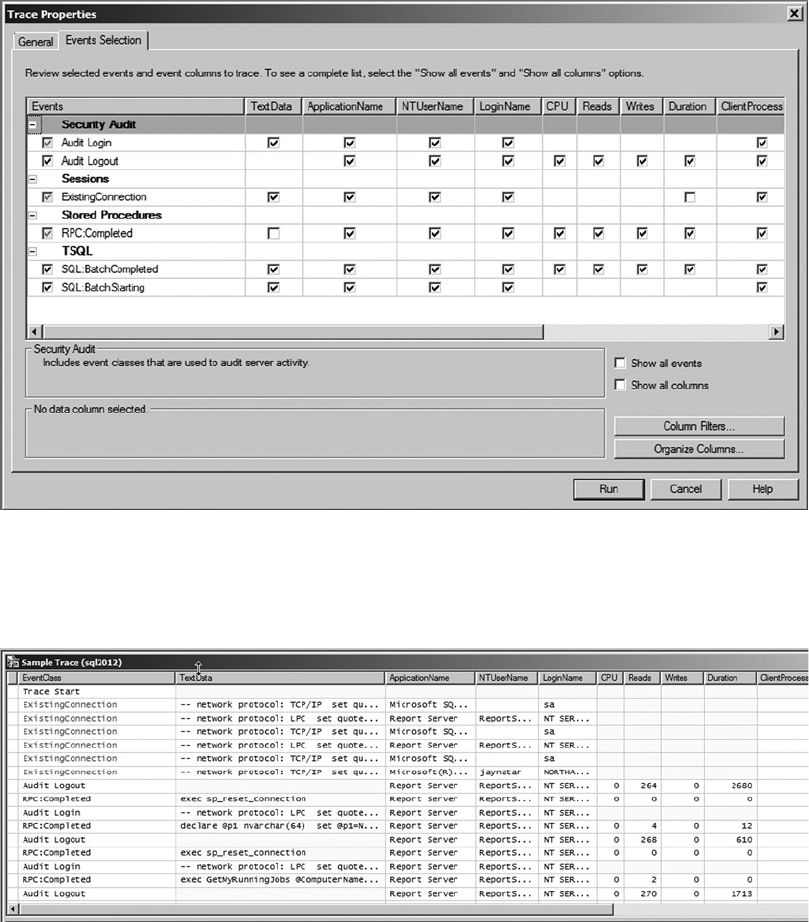

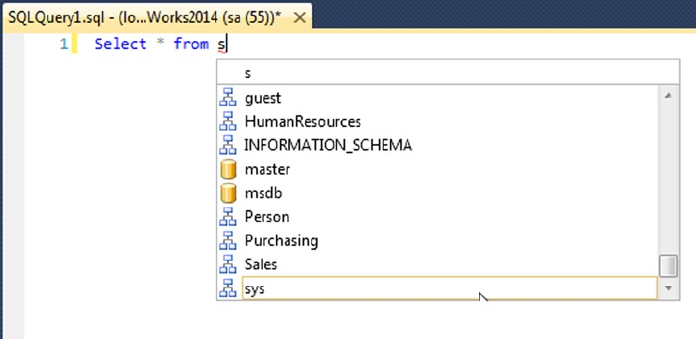

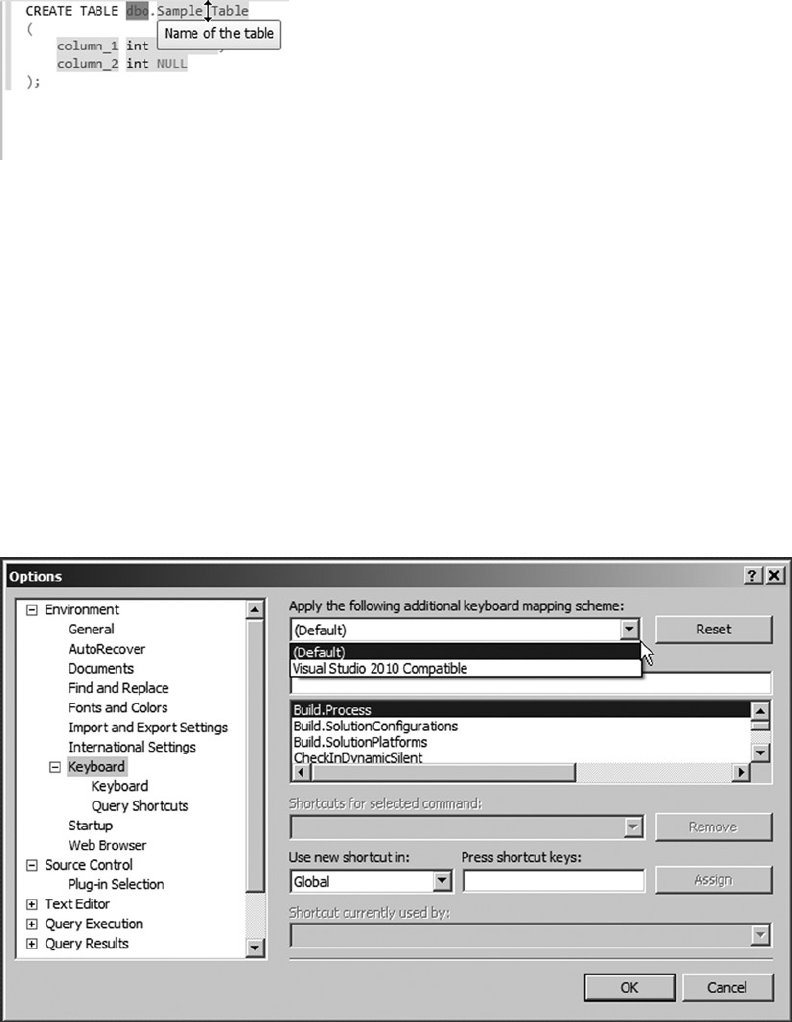

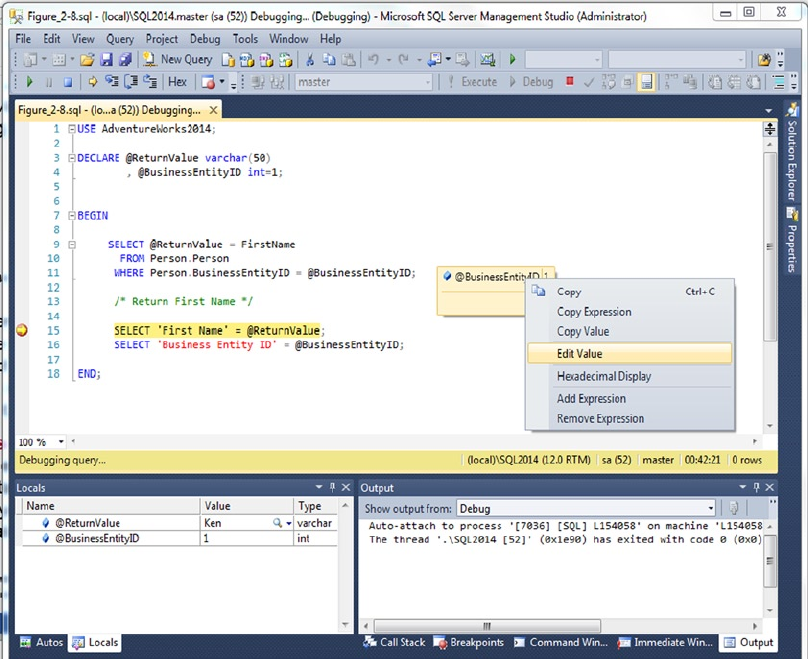

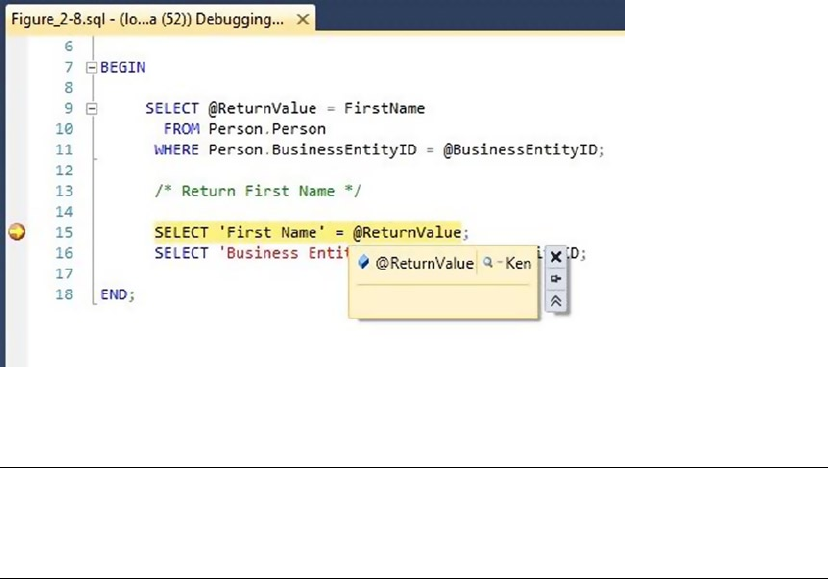

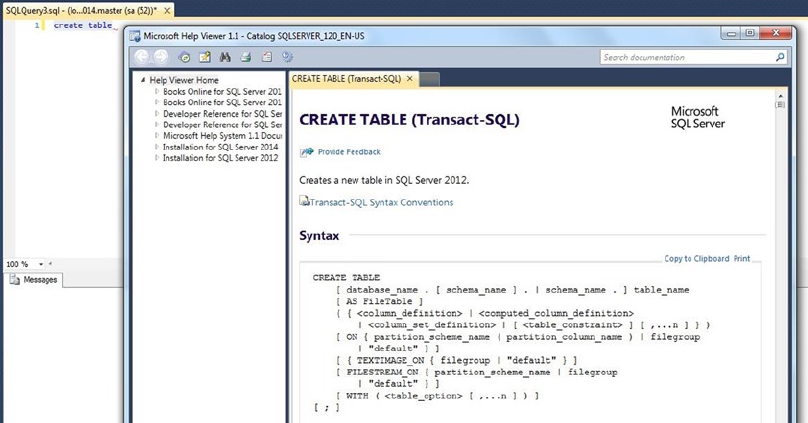

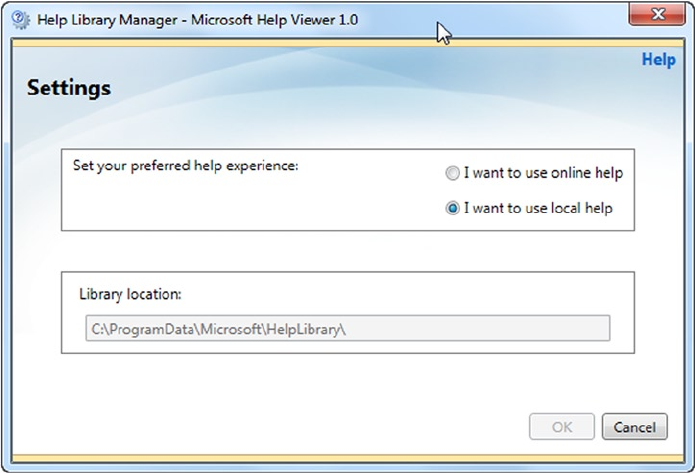

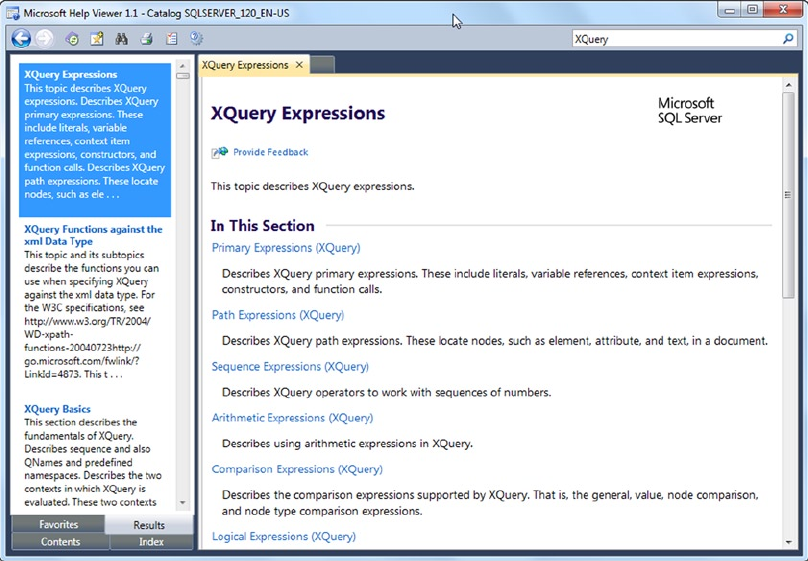

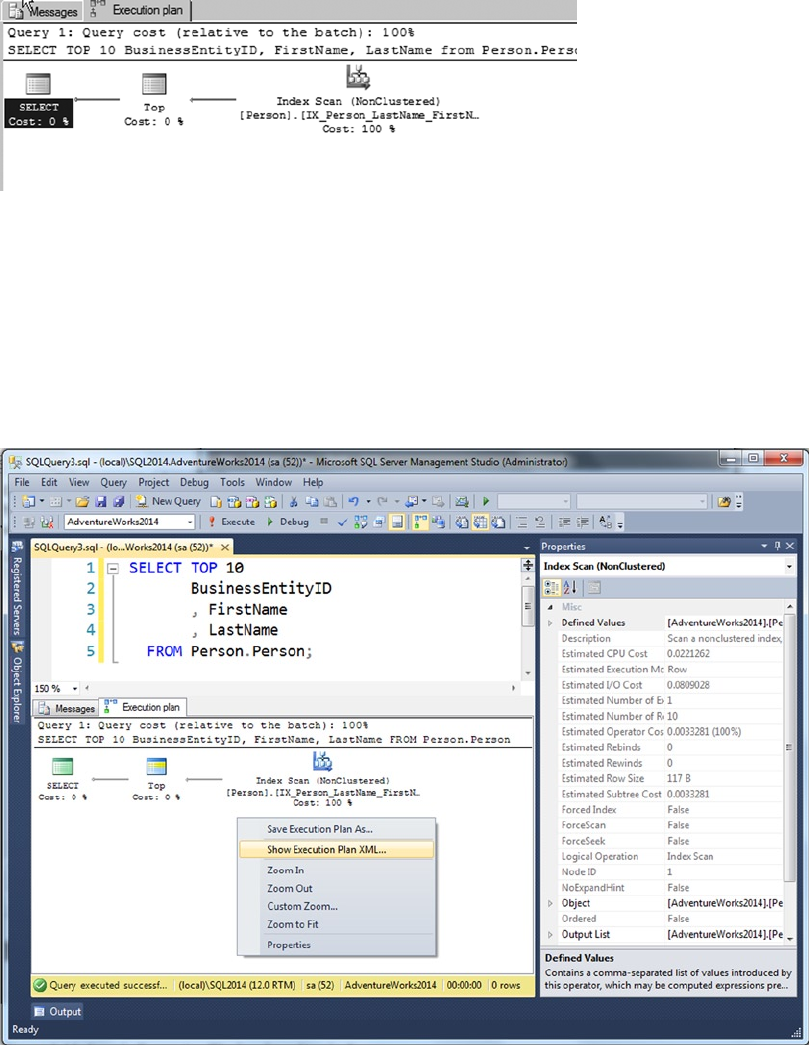

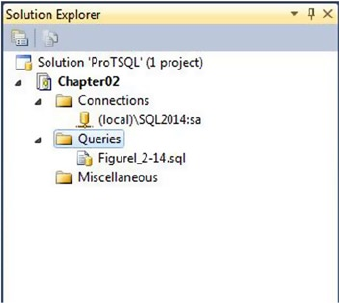

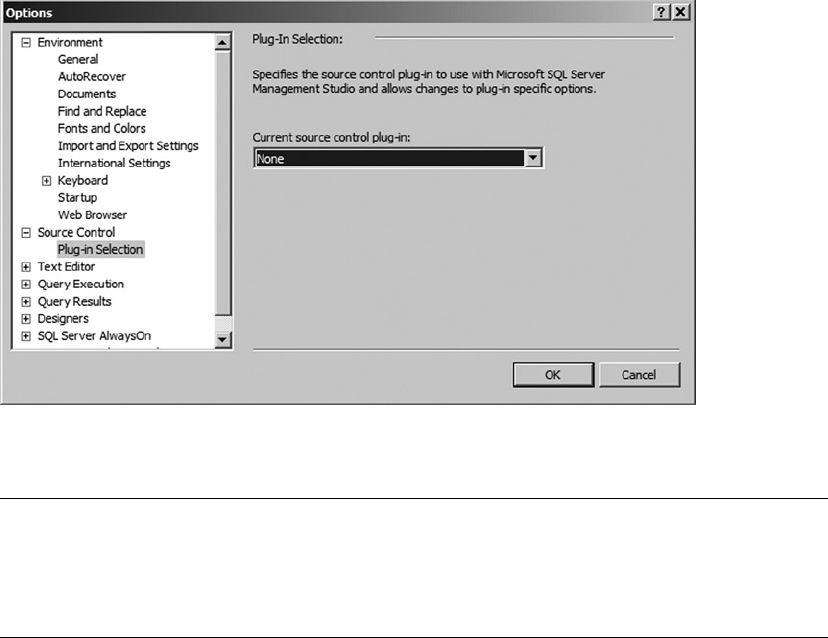

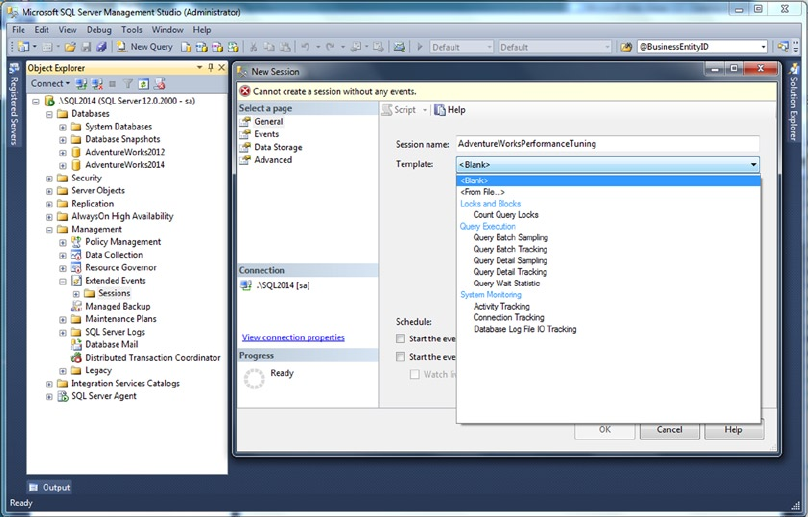

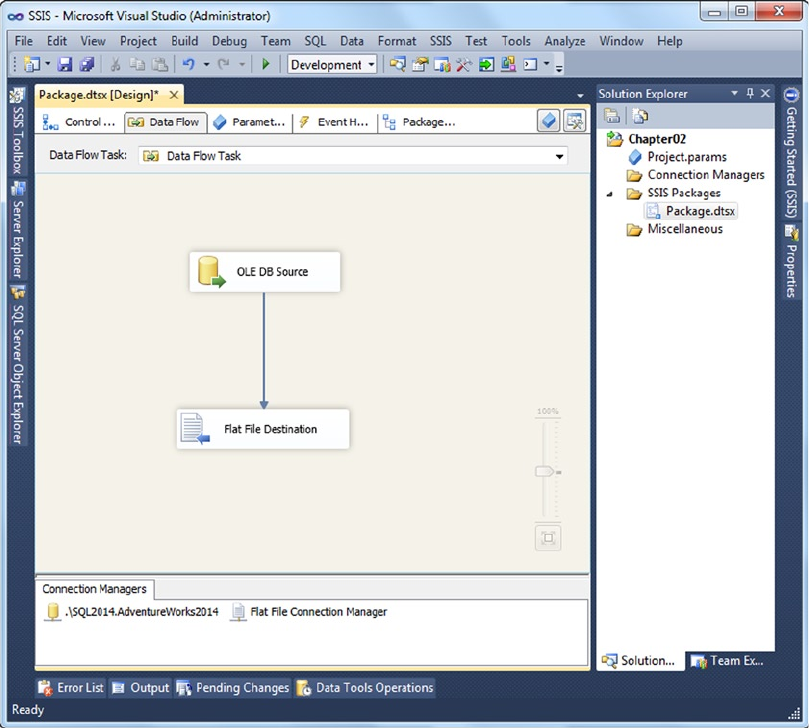

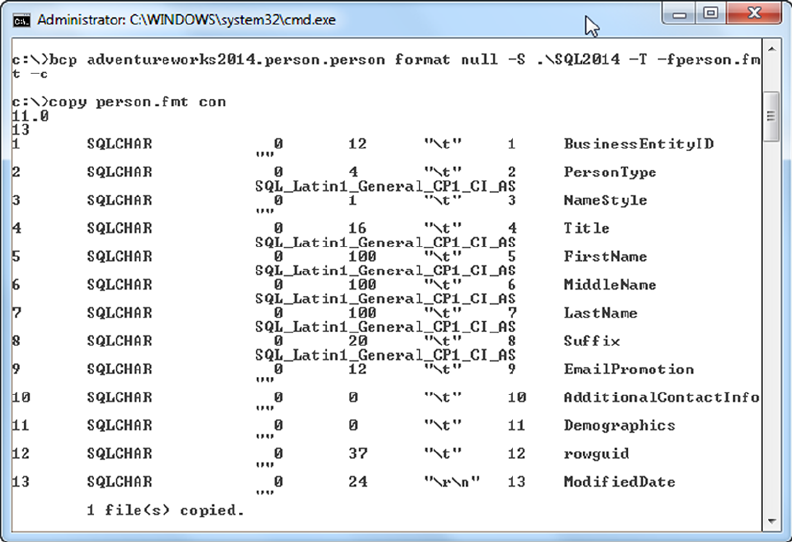

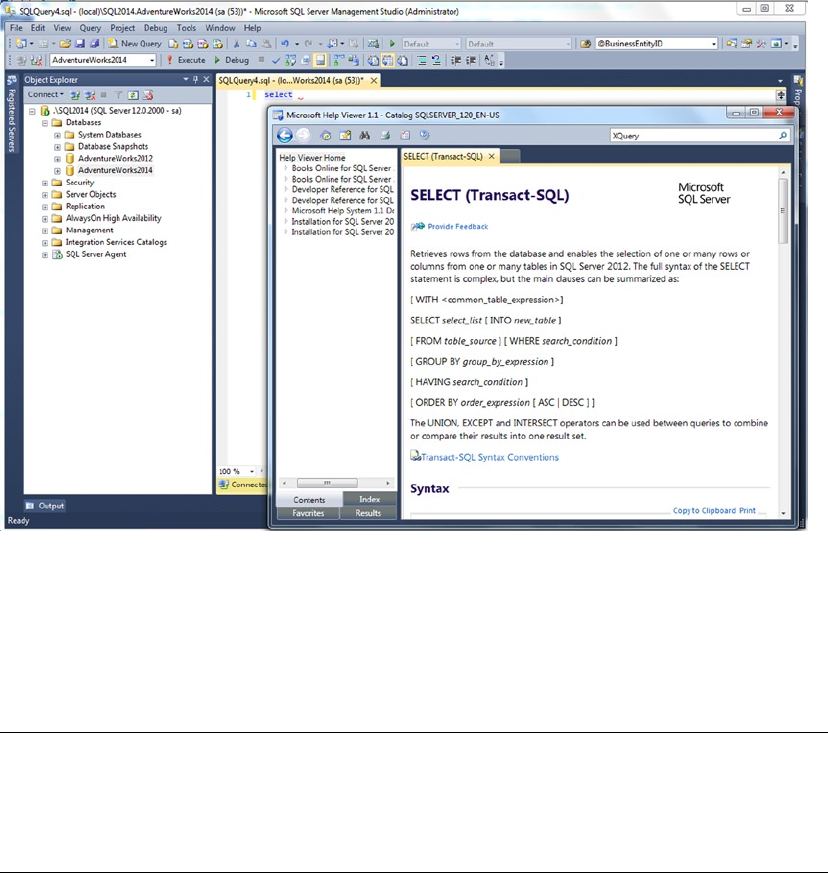

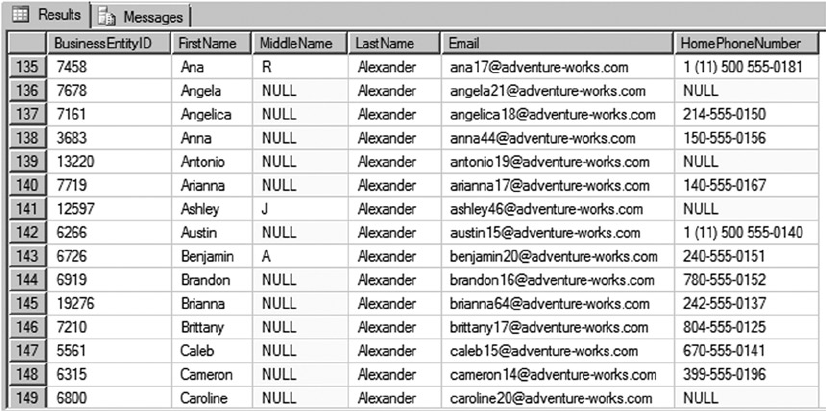

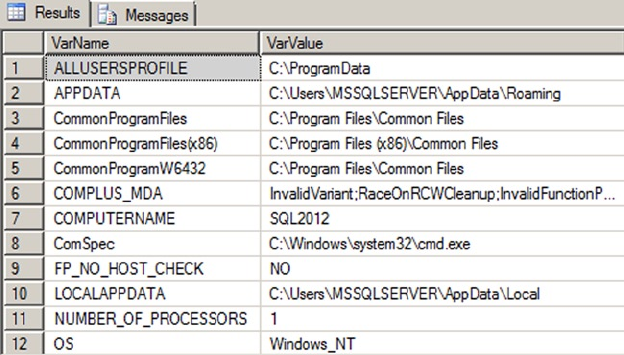

IntelliSense