PsychoPy: Psychology Software for Python, 1.78.00 Manual


PsychoPy - Psychology software for

Python

Release 1.78.00

Jonathan Peirce

August 02, 2013

CONTENTS

1 Overview

1.1 Features

1.2 Hardware Integration

1.3 System requirements

2 Contributing to the project

2.1 Why make it free?

2.2 How do I contribute changes?

2.3 Contribute to the Forum (mailing list)

3 Credits

3.1 Developers

3.2 Support

3.3 Funding

4 Installation

4.1 Overview

4.2 Recommended hardware

4.3 Windows

4.4 Mac OS X

4.5 Linux

5 Dependencies

5.1 Essential packages

5.2 Suggested packages

6 Getting Started

7 Builder

7.1 A first program

7.2 Getting beyond Hello

8 Builder-to-coder

9 Coder

10 General issues

10.1 Monitor Center

10.2 Units for the window and stimuli

10.3 Color spaces

10.4 Preferences

10.5 Data outputs

10.6 Gamma correcting a monitor

10.7 Timing Issues and synchronisation

11 Builder

11.1 Builder concepts

11.2 Routines

11.3 Flow

11.4 Components

11.5 Experiment settings

11.6 Defining the onset/duration of components

11.7 Generating outputs (datafiles)

11.8 Common Mistakes (aka Gotcha’s)

11.9 Compiling a Script

11.10 Set up your monitor properly

11.11 Future developments

12 Coder

12.1 Basic Concepts

12.2 PsychoPy Tutorials

13 Troubleshooting

13.1 The application doesn’t start

13.2 I run a Builder experiment and nothing happens

13.3 Manually turn off the viewing of output

13.4 Use the source (Luke?)

13.5 Cleaning preferences and app data

14 Recipes (“How-to”s)

14.1 Adding external modules to Standalone PsychoPy

14.2 Animation

14.3 Scrolling text

14.4 Fade-in / fade-out effects

14.5 Building an application from your script

14.6 Builder - providing feedback

14.7 Builder - terminating a loop

14.8 Installing PsychoPy in a classroom (administrators)

14.9 Generating formatted strings

14.10 Coder - interleave staircases

14.11 Making isoluminant stimuli

14.12 Adding a web-cam

15 Frequently Asked Questions (FAQs)

15.1 Why is the bits++ demo not working?

15.2 Can PsychoPy run my experiment with sub-millisecond timing?

16 Resources (e.g. for teaching)

16.1 P4N

16.2 Youtube tutorials

16.3 Lecture materials (Builder)

16.4 Lecture materials (Coder)

16.5 Previous events

17 Reference Manual (API)

17.1 psychopy.core - basic functions (clocks etc.)

17.2 psychopy.visual - many visual stimuli

17.3 psychopy.data - functions for storing/saving/analysing data

17.4 psychopy.contrib.opensslwrap Encryption (beta)

17.5 psychopy.event - for keypresses and mouse clicks

17.6 psychopy.filters - helper functions for creating filters

17.7 psychopy.gui - create dialogue boxes

17.8 psychopy.hardware - hardware interfaces

17.9 psychopy.info - functions for getting information about the system

17.10 psychopy.logging - control what gets logged

17.11 psychopy.microphone - Capture and analyze sound

17.12 psychopy.misc - miscellaneous routines for converting units etc

17.13 psychopy.monitors - for those that don’t like Monitor Center

17.14 psychopy.parallel - functions for interacting with the parallel port

17.15 psychopy.serial - functions for interacting with the serial port

17.16 psychopy.sound - play various forms of sound

17.17 psychopy.web - Web methods

17.18 Indices and tables

18 For Developers

18.1 Using the repository

18.2 Adding documentation

18.3 Adding a new Builder Component

18.4 Style-guide for coder demos

18.5 Adding a new Menu Item

19 PsychoPy Experiment file format (.psyexp)

19.1 Parameters

19.2 Settings

19.3 Routines

19.4 Components

19.5 Flow

19.6 Names

20 Glossary

21 Indices

Python Module Index

Index

PsychoPy - Psychology software for Python, Release 1.78.00

Contents:

CHAPTER

ONE

OVERVIEW

PsychoPy is an open-source package for running experiments in Python (a real and free alternative to Matlab). PsychoPy combines the graphical strengths of OpenGL with the easy Python syntax to give scientists a free and simple stimulus presentation and control package. It is used by many labs worldwide for psychophysics, cognitive neuroscience and experimental psychology.

Because it’s open source, you can download it and modify the package if you don’t like it. And if you make changes

that others might use then please consider giving them back to the community via the mailing list. PsychoPy has been

written and provided to you absolutely for free. For it to get better it needs as much input from everyone as possible.

1.1 Features

There are many advantages to using PsychoPy, but here are some of the key ones:

• Simple install process

• Huge variety of stimuli (see screenshots) generated in real-time:

– linear gratings, bitmaps constantly updating

– radial gratings

– random dots

– movies (DivX, mov, mpg...)

– text (unicode in any truetype font)

– shapes

– sounds (tones, numpy arrays, wav, ogg...)

• Platform independent - run the same script on Win, OS X or Linux

• Flexible stimulus units (degrees, cm, or pixels)

• Coder interface for those that like to program

• Builder interface for those that don’t

• Input from keyboard, mouse, microphone or button boxes

• Multi-monitor support

• Automated monitor calibration (for supported photometers)

1.2 Hardware Integration

PsychoPy supports communication via serial ports, parallel ports and compiled drivers (dlls and dylibs), so it can talk to any hardware that your computer can! Interfaces are prebuilt for:

• Spectrascan PR650, PR655, PR670

• Minolta LS110, LS100

• Cambridge Research Systems Bits++

• Cedrus response boxes (RB7xx series)

1.3 System requirements

Although PsychoPy runs on a wide variety of hardware, and on Windows, OS X or Linux, it really does benefit from a decent graphics card. Get an ATI or nVidia card that supports OpenGL 2.0. Avoid built-in Intel graphics chips (e.g. GMA 950).

CHAPTER

TWO

CONTRIBUTING TO THE PROJECT

PsychoPy is an open-source, community-driven project. It is written and provided free out of goodwill by people that

make no money from it and have other jobs to do. The way that open-source projects work is that users contribute

back some of their time.

2.1 Why make it free?

It has taken, literally, thousands of hours of programming to get PsychoPy where it is today and it is provided absolutely for free. Without someone working on it full time (which would mean charging you for it) the only way for the software to keep getting better is if people contribute back to the project.

Please, please, please make the effort to give a little back to this project. If you found the documentation hard to

understand then think about how you would have preferred it to be written and contribute it.

2.2 How do I contribute changes?

For simple changes, and for users who aren’t so confident with things like version control systems, just send your changes to the mailing list.

If you want to make more substantial changes then it’s often good to discuss them first on the developers mailing list.

The ideal model is to contribute via the repository on GitHub. There is more information on that in the For Developers section of the documentation.

2.3 Contribute to the Forum (mailing list)

The easiest way to help the project is to write to the forum (mailing list) with suggestions and solutions.

For documentation suggestions please try to provide actual replacement text. You, as a user, are probably better placed

to write this than the actual developers (they know too much to write good docs)!

If you’re having problems, e.g. you think you may have found a bug:

• take a look at the Troubleshooting and Common Mistakes (aka Gotcha’s) first

• submit a message with as much information as possible about your system and the problem

• please try to be precise. Rather than say “It didn’t work”, try to say what specific form of “not working” you found (did the stimulus not appear? or it appeared but poorly rendered? or the whole application crashed?!)

• if there is an error message, try to provide it completely

If you had problems and worked out how to fix things, even if it turned out the problem was your own lack of understanding, please still contribute the information. Others are likely to have similar problems. Maybe the documentation could be clearer, or your email to the forum will be found by others googling for the same problem.

To make your message more useful, please try to:

• provide info about your system and PsychoPy version (e.g. the output of the sysInfo demo in Coder). A lot of problems are specific to a particular graphics card or platform

• provide a minimal example of the breaking code (if you’re writing scripts)
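If the sysInfo demo is not to hand, a few lines of standard-library Python can gather similar basics for a message to the list. This is a minimal sketch, not the sysInfo demo itself: the helper name `system_report` is hypothetical, and it assumes only that an installed PsychoPy exposes `psychopy.__version__`.

```python
from __future__ import print_function

import platform
import sys


def system_report():
    """Collect basic details worth pasting into a mailing-list message."""
    report = {
        "platform": platform.platform(),
        "python": platform.python_version(),
        "executable": sys.executable,
    }
    try:
        import psychopy  # optional: only present if PsychoPy is installed
        report["psychopy"] = getattr(psychopy, "__version__", "unknown")
    except Exception as err:  # not installed, or failed while importing
        report["psychopy"] = "not importable (%s)" % err
    return report


for key, value in sorted(system_report().items()):
    print("%-10s %s" % (key, value))
```

Graphics card and driver details are not covered by this sketch, so mention them separately if the problem looks display-related.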

CHAPTER

THREE

CREDITS

3.1 Developers

PsychoPy was initially created and maintained by Jon Peirce but has many contributors to the code:

Jeremy Gray, Yaroslav Halchenko, Erik Kastman, Mike MacAskill, William Hogman, Jonas Lindeløv,

Ariel Rokem, Dave Britton, Gary Strangman, C Luhmann, Sol Simpson, Hiroyuki Sogo

You can see details of contributions on Ohloh.net and there’s a visualisation of PsychoPy’s development history on

youtube.

PsychoPy also stands on top of a large number of other developers’ work. It wouldn’t be possible to write this package without the preceding work of those that wrote the Dependencies.

3.2 Support

Software projects aren’t just about code. A great deal of work is done by the community in terms of supporting each other. Jeremy Gray, Mike MacAskill, Jared Roberts and Jonas Lindeløv particularly stand out in doing a fantastic job of answering other users’ questions. You can see the most active posters on the users list here: https://groups.google.com/forum/#!aboutgroup/psychopy-users

3.3 Funding

The PsychoPy project has attracted small grants from the HEA Psychology Network and Cambridge Research Systems. Thanks to those organisations for their support.

Jon is paid by The University of Nottingham (which allows him to spend time on this) and his grants from the BBSRC and Wellcome Trust have also helped the development of PsychoPy.

CHAPTER

FOUR

INSTALLATION

4.1 Overview

PsychoPy can be installed in three main ways:

• As an application: The “Stand Alone” versions include everything you need to create and run experiments. When in doubt, choose this option.

• As libraries: PsychoPy and the libraries it depends on can also be installed individually, providing greater flexibility. This option requires managing a python environment.

• As source code: If you want to customize how PsychoPy works, consult the developer’s guide for installation and work-flow suggestions.

When you start PsychoPy for the first time, a Configuration Wizard will retrieve and summarize key system settings.

Based on the summary, you may want to adjust some preferences to better reflect your environment. In addition, this

is a good time to unpack the Builder demos to a location of your choice. (See the Demo menu in the Builder.)

If you get stuck or have questions, please email the mailing list.

If all goes well, at this point your installation will be complete! See the next section of the manual, Getting started.

4.2 Recommended hardware

The minimum requirement for PsychoPy is a computer with a graphics card that supports OpenGL. Many newer

graphics cards will work well. Ideally the graphics card should support OpenGL version 2.0 or higher. Certain visual

functions run much faster if OpenGL 2.0 is available, and some require it (e.g. ElementArrayStim).

If you already have a computer, you can install PsychoPy and the Configuration Wizard will auto-detect the card and

drivers, and provide more information. It is inexpensive to upgrade most desktop computers to an adequate graphics

card. High-end graphics cards can be very expensive but are only needed for vision research (and high-end gaming).

If you’re thinking of buying a laptop for running experiments, avoid the built-in Intel graphics chips (e.g. GMA 950). The drivers are crummy and performance is poor; graphics cards on laptops are more difficult to exchange. Get something with nVidia or ATI chips instead. Some graphics cards that are known to work with PsychoPy can be found here; that list is not exhaustive, and many other cards will also work.

4.3 Windows

Once installed, you’ll find a link to the PsychoPy application in Start > Programs > PsychoPy2. Click that and the Configuration Wizard should start.

The wizard will try to make sure you have reasonably current drivers for your graphics card. You may be directed to download the latest drivers from the vendor, rather than using the pre-installed Windows drivers. If necessary, get new drivers directly from the graphics card vendor; don’t rely on Windows updates. The Windows-supplied drivers are buggy and sometimes don’t support OpenGL at all.

The StandAlone installer adds the PsychoPy folder to your path, so you can run the included version of python from

the command line. If you have your own version of python installed as well then you need to check which one is run

by default, and change your path according to your personal preferences.
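One way to see which python is picked up by default is to compare the interpreter you are running with the first `python` found on your path. The following is a rough sketch using only the standard library; the helper name `first_on_path` is hypothetical, and the list of Windows suffixes is an assumption rather than a complete reading of PATHEXT.

```python
from __future__ import print_function

import os
import sys


def first_on_path(name, path=None):
    """Return the first executable called `name` on the search path, or None."""
    if path is None:
        path = os.environ.get("PATH", "")
    # On Windows, executables carry an extension such as .exe
    suffixes = [".exe", ".bat", ""] if os.name == "nt" else [""]
    for folder in path.split(os.pathsep):
        for suffix in suffixes:
            candidate = os.path.join(folder, name + suffix)
            if os.path.isfile(candidate) and os.access(candidate, os.X_OK):
                return candidate
    return None


print("running interpreter:", sys.executable)
print("first 'python' on path:", first_on_path("python"))
```

If the two lines disagree, the order of folders in your path decides which python a bare `python` command will start.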

4.4 Mac OS X

There are different ways to install PsychoPy on a Mac that will suit different users. Almost all Macs come with a suitable video card by default.

• Intel Mac users (with OS X v10.7 or higher; 10.5 and 10.6 might still work) can simply download the standalone

application bundle (the dmg file) and drag it to their Applications folder. (Installing it elsewhere should work

fine too.)

• Users of macports can install PsychoPy and all its dependencies simply with:

sudo port install py25-psychopy

(Thanks to James Kyles.)

• For PPC Macs (or for Intel Mac users that want their own custom python for running PsychoPy) you need to

install the dependencies and PsychoPy manually. The easiest way is to use the Enthought Python Distribution

(see Dependencies, below).

• You could alternatively manually install the ‘framework build’ of python and the dependencies (see below). One

advantage to this is that you can then upgrade versions with:

sudo easy_install -N -Z -U psychopy

4.5 Linux

Debian systems: PsychoPy is in the Debian packages index so you can simply do:

sudo apt-get install psychopy

Ubuntu (and other Debian-based distributions):

1. Add the following sources in Synaptic, in the Configuration > Repository dialog box, under “Other software”:

deb http://neuro.debian.net/debian karmic main contrib non-free

deb-src http://neuro.debian.net/debian karmic main contrib non-free

2. Then follow the ‘Package authentification’ procedure described in http://neuro.debian.net/

3. Then install the psychopy package under Synaptic or through sudo apt-get install psychopy which will install

all dependencies.

(Thanks to Yaroslav Halchenko for the Debian and NeuroDebian package.)

non-Debian systems: You need to install the dependencies below. Then install PsychoPy:

$ sudo easy_install psychopy

...

Downloading http://psychopy.googlecode.com/files/PsychoPy-1.75.01-py2.7.egg

CHAPTER

FIVE

DEPENDENCIES

Like many open-source programs, PsychoPy depends on the work of many other people in the form of libraries.

5.1 Essential packages

Python: If you need to install python, or just want to, the easiest way is to use the Enthought Python Distribution, which is free for academic use. Be sure to get a 32-bit version. The only things it misses are avbin, pyo, and flac.

If you want to install each library individually rather than use the simpler distributions of packages above then you can

download the following. Make sure you get the correct version for your OS and your version of Python. easy_install

will work for many of these, but some require compiling from source.

• python (32-bit only, version 2.6 or 2.7; 2.5 might work, 3.x will not)

• avbin (movies). On Mac: 1) Download version 5 from google (not a higher version). 2) Start a terminal and type sudo mkdir -p /usr/local/lib . 3) cd to the unpacked avbin directory and type sh install.sh . 4) Start or restart PsychoPy; from the shell in PsychoPy’s Coder view, this should work: from pyglet.media import avbin . If you run a script and get an error saying 'NoneType' object has no attribute 'blit', it probably means you did not install version 5.

• setuptools

• numpy (version 0.9.6 or greater)

• scipy (version 0.4.8 or greater)

• pyglet (version 1.1.4, not version 1.2)

• wxPython (version 2.8.10 to 2.8.11, not 2.9)

• Python Imaging Library (sudo easy_install PIL)

• matplotlib (for plotting and fast polygon routines)

• lxml (needed for loading/saving builder experiment files)

• openpyxl (for loading params from xlsx files)

• pyo (sound, version 0.6.2 or higher, compile with --no-messages)

These packages are only needed for Windows:

•pywin32

•winioport (to use the parallel port)

•inpout32 (an alternative method to using the parallel port on Windows)

•inpoutx64 (to use the parallel port on 64-bit Windows)

These packages are only needed for Linux:

•pyparallel (to use the parallel port)
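When installing the libraries individually, it can help to check what your python can actually import before starting PsychoPy. The sketch below is an illustration only: the helper name `check_modules` is hypothetical, and the import names in `MODULES` are assumptions (a package's import name can differ from its project name, e.g. wxPython is imported as `wx` and the Python Imaging Library as `PIL`). It reports versions but does not compare them against the minimums listed above.

```python
from __future__ import print_function

import importlib

# Assumed import names for some of the essential packages listed above.
MODULES = ["numpy", "scipy", "pyglet", "wx", "PIL",
           "matplotlib", "lxml", "openpyxl", "pyo"]


def check_modules(names):
    """Map each module name to a version string, or None if it cannot be imported."""
    found = {}
    for name in names:
        try:
            module = importlib.import_module(name)
        except Exception:  # ImportError, or a failure inside the package itself
            found[name] = None
        else:
            # Not every package defines __version__, so fall back to "unknown"
            found[name] = getattr(module, "__version__", "unknown")
    return found


for name, version in sorted(check_modules(MODULES).items()):
    print("%-12s %s" % (name, version if version else "MISSING"))
```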

5.2 Suggested packages

In addition to the required packages above, additional packages can be useful to PsychoPy users, e.g. for controlling

hardware and performing specific tasks. These are packaged with the Standalone versions of PsychoPy but users with

their own custom Python environment need to install these manually. Most of these can be installed with easy_install.

General packages:

• psignifit for bootstrapping and other resampling tests

• pyserial for interfacing with the serial port

• parallel python (aka pp) for parallel processing

•flac audio codec, for working with google-speech

Specific hardware interfaces:

•pynetstation to communicate with EGI netstation. See notes on using egi (pynetstation)

• ioLabs toolbox

• labjack toolbox

For developers:

•pytest and coverage for running unit tests

•sphinx for building documentation

CHAPTER

SIX

GETTING STARTED

As an application, PsychoPy has two main views: the Builder view, and the Coder view. It also has an underlying API that you can call directly.

1. Builder. You can generate a wide range of experiments easily from the Builder using its intuitive, graphical

user interface (GUI). This might be all you ever need to do. But you can always compile your experiment

into a python script for fine-tuning, and this is a quick way for experienced programmers to explore some of

PsychoPy’s libraries and conventions.

2. Coder. For those comfortable with programming, the Coder view provides a basic code editor with syntax

highlighting, code folding, and so on. Importantly, it has its own output window and Demo menu. The demos

illustrate how to do specific tasks or use specific features; they are not whole experiments. The Coder tutorials

should help get you going, and the API reference will give you the details.

The Builder and Coder views are the two main aspects of the PsychoPy application. If you’ve installed the StandAlone

version of PsychoPy on MS Windows then there should be an obvious link to PsychoPy in your > Start > Programs. If

you installed the StandAlone version on Mac OS X then the application is where you put it (!). On these two platforms

you can open the Builder and Coder views from the View menu and the default view can be set from the preferences.

On Linux, you can start PsychoPy from a command line, or make a launch icon (which can depend on the desktop and distro). If the PsychoPy app is started with flags --coder (or -c), or --builder (or -b), then the preferences will be overridden and that view will be created as the app opens.
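The "flag overrides the preference" behaviour can be pictured with a small argparse sketch. This is not PsychoPy's launcher code: the function `parse_view` and its `preferred` argument are hypothetical, and only the flag names are taken from the text above.

```python
import argparse


def parse_view(argv, preferred="builder"):
    """Return the view to open: a command-line flag wins over the saved preference."""
    parser = argparse.ArgumentParser(description="Hypothetical launcher sketch")
    parser.add_argument("-c", "--coder", action="store_const",
                        const="coder", dest="view")
    parser.add_argument("-b", "--builder", action="store_const",
                        const="builder", dest="view")
    args = parser.parse_args(argv)
    # With no flag given, args.view stays None and the preference is used
    return args.view if args.view is not None else preferred


print(parse_view(["--coder"]))
print(parse_view([], preferred="coder"))
```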

For experienced python programmers, it’s possible to use PsychoPy without ever opening the Builder or Coder. Install the PsychoPy libraries and dependencies, and use your favorite IDE instead of the Coder.

CHAPTER

SEVEN

BUILDER

When learning a new computer language, the classic first program is simply to print or display “Hello world!”. Let’s do it.

7.1 A first program

Start PsychoPy, and be sure to be in the Builder view.

• If you have poked around a bit in the Builder already, be sure to start with a clean slate. To get a new Builder

view, type Ctrl-N on Windows or Linux, or Cmd-N on Mac.

•Click on a Text component

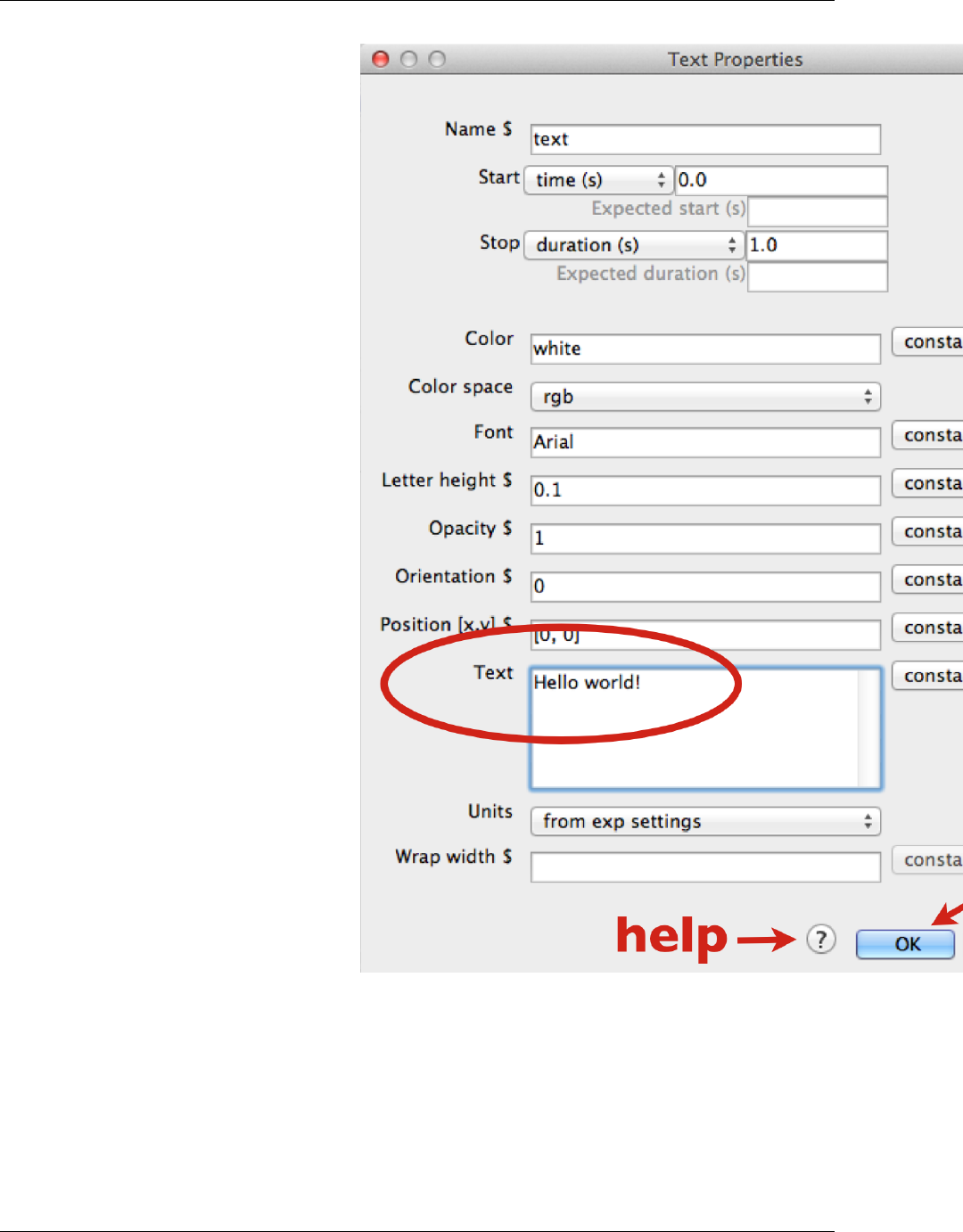

and a Text Properties dialog will pop up.

• In the Text field, replace the default text with your message. When you run the program, the text you type here

will be shown on the screen.

• Click OK (near the bottom of the dialog box). (Properties dialogs have a link to online help: an icon at the bottom, near the OK button.)

• Your text component now resides in a routine called trial. You can click on it to view or edit it. (Components,

Routines, and other Builder concepts are explained in the Builder documentation.)

•Back in the main Builder, type Ctrl-R (Windows, Linux) or Cmd-R (Mac), or use the mouse to click the Run icon.

16 Chapter 7. Builder

PsychoPy - Psychology software for Python, Release 1.78.00

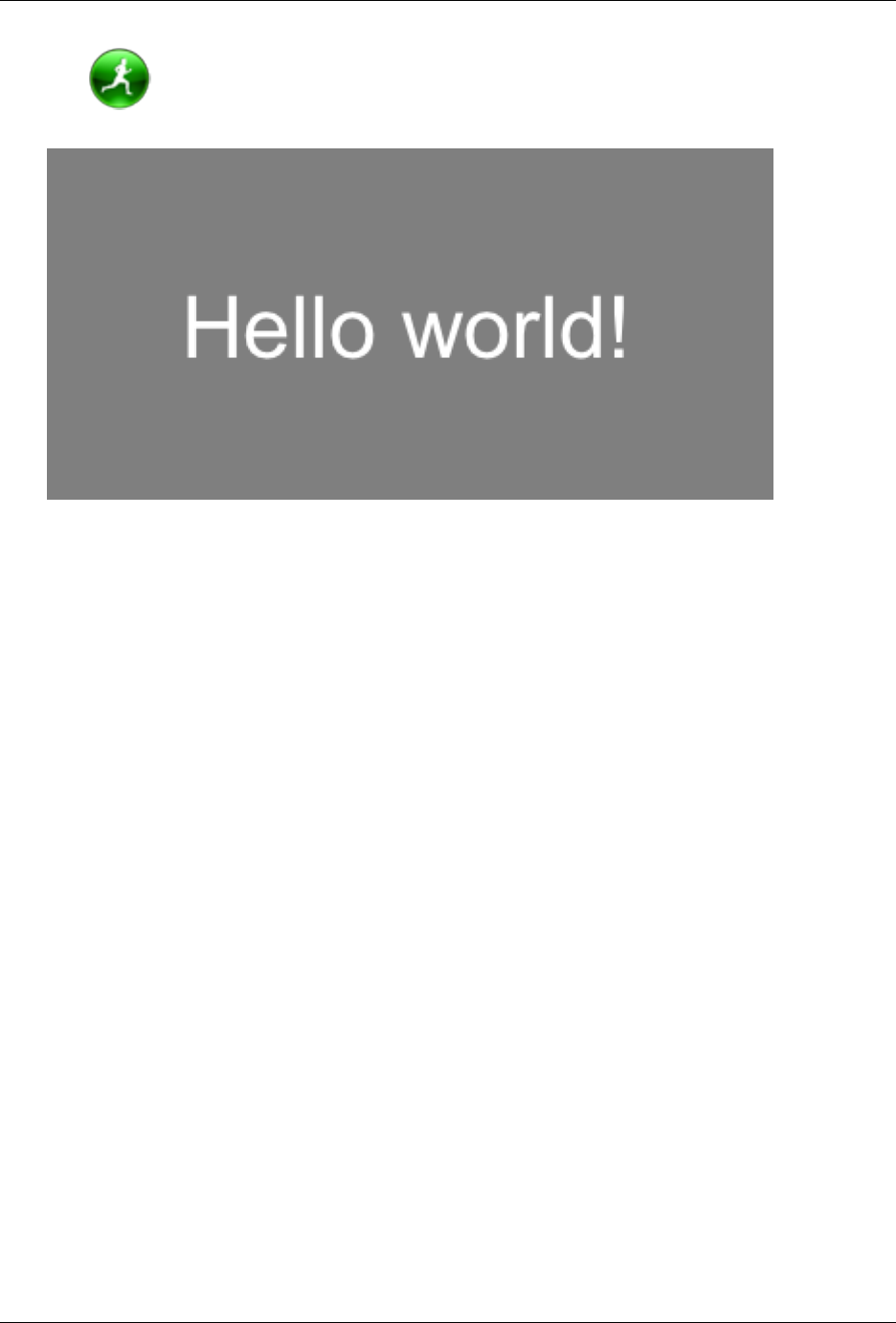

Assuming you typed in “Hello world!”, your screen should have looked this this (briefly):

If nothing happens or it looks wrong, recheck all the steps above; be sure to start from a new Builder view.

What if you wanted to display your cheerful greeting for longer than the default time?

• Click on your Text component (the existing one, not a new one).

• Edit the Stop duration (s) to be 3.2; times are in seconds.

• Click OK.

• And finally Run.

When running an experiment, you can quit by pressing the escape key (this can be configured or disabled). You can quit PsychoPy from the File menu, or by typing Ctrl-Q / Cmd-Q.

7.2 Getting beyond Hello

To do more, you can try things out and see what happens. You may want to consult the Builder documentation. Many

people find it helpful to explore the Builder demos, in part to see what is possible, and especially to see how different

things are done.

A good way to develop your own first PsychoPy experiment is to base it on the Builder demo that seems closest. Copy

it, and then adapt it step by step to become more and more like the program you have in mind. Being familiar with the

Builder demos can only help this process.

You could stop here, and just use the Builder for creating your experiments. It provides a lot of the key features that people need to run a wide variety of studies. But it does have its limitations. When you want to have more complex designs or features, you’ll want to investigate the Coder. As a segue to the Coder, let’s start from the Builder, and see how Builder programs work.

CHAPTER

EIGHT

BUILDER-TO-CODER

Whenever you run a Builder experiment, PsychoPy will first translate it into python code, and then execute that code.

To get a better feel for what was happening “behind the scenes” in the Builder program above:

• In the Builder, load or recreate your “hello world” program.

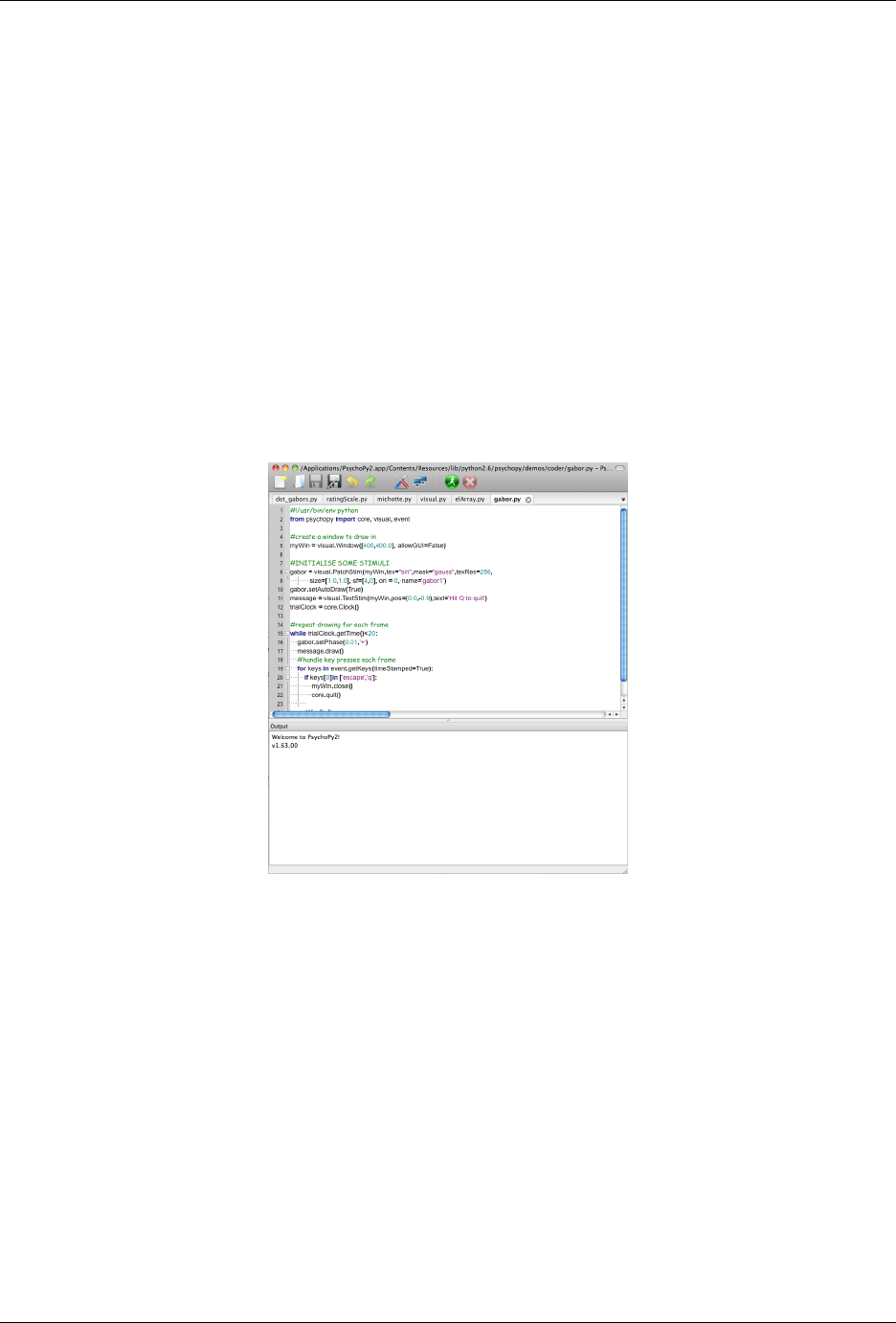

•Instead of running the program, explicitly convert it into python: Type F5, or click the Compile icon:

The view will automatically switch to the Coder, and display the python code. If you then save and run this code, it will look the same as running it directly from the Builder.

It is always possible to go from the Builder to python code in this way. You can then edit that code and run it as a

python program. However, you cannot go from code back to a Builder representation.

To switch quickly between Builder and Coder views, you can type Ctrl-L / Cmd-L.

CHAPTER

NINE

CODER

Being able to inspect Builder-generated code is nice, but it’s possible to write code yourself, directly. With the Coder and various libraries, you can do virtually anything that your computer is capable of doing, using a full-featured modern programming language (python).

For variety, let’s say hello to the Spanish-speaking world. PsychoPy knows Unicode (UTF-8).

If you are not in the Coder, switch to it now.

• Start a new code document: Ctrl-N /Cmd-N.

• Type (or copy & paste) the following:

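from psychopy import visual, core

win = visual.Window()  # open a window with default settings

msg = visual.TextStim(win, text=u"\u00A1Hola mundo!")  # the greeting to show

msg.draw()  # draw the text to the back buffer

win.flip()  # make the drawing visible on screen

core.wait(1)  # keep it visible for 1 second

win.close()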

• Save the file (the same way as in Builder).

• Run the script.

Note that the same events happen on-screen with this code version, despite the code being much simpler than the code

generated by the Builder. (The Builder actually does more, such as prompt for a subject number.)
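In the Builder example the greeting was kept on screen longer by setting the stop duration to 3.2 seconds; in a script the equivalent is the argument to core.wait(). Here is a minimal sketch that wraps the same steps in a function. It uses only the calls already shown above; the function name `show_message` and its input check are illustrative additions, and the import is deferred so the function can be defined without opening a window.

```python
def show_message(text, seconds=1.0):
    """Open a window, show `text` for `seconds`, then close the window."""
    if seconds <= 0:
        raise ValueError("seconds must be positive, got %r" % (seconds,))
    # Deferred import: PsychoPy is only needed once a window is actually opened.
    from psychopy import visual, core

    win = visual.Window()
    try:
        msg = visual.TextStim(win, text=text)
        msg.draw()          # draw to the back buffer
        win.flip()          # make the drawing visible
        core.wait(seconds)  # hold it on screen
    finally:
        win.close()         # close the window even if something above fails
```

Calling `show_message(u"\u00A1Hola mundo!", seconds=3.2)` should then behave like the script above, but with the longer duration.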

Coder Shell

The shell provides an interactive python interpreter, which means you can enter commands here to try them out. This

provides yet another way to send your salutations to the world. By default, the Coder’s output window is shown at the

bottom of the Coder window. Click on the Shell tab, and you should see python’s interactive prompt, >>>:

PyShell in PsychoPy - type some commands!

Type "help", "copyright", "credits" or "license" for more information.

>>>

At the prompt, type:

>>> print u"\u00A1Hola mundo!"
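The `\u00A1` in these strings is a Unicode escape for the inverted exclamation mark. A short sketch in plain python (no PsychoPy needed, and written to run on Python 2.7 as well as later versions) shows what the escape stands for and how the character is stored as UTF-8; the variable names are just for illustration.

```python
from __future__ import print_function

greeting = u"\u00A1Hola mundo!"

# The first character is U+00A1, the inverted exclamation mark
first = greeting[0]
# Encoded as UTF-8, that single character occupies two bytes
encoded = greeting.encode("utf-8")

print(len(greeting), len(encoded))  # 12 characters, 13 bytes
```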

You can do more complex things, such as type in each line from the Coder example directly into the Shell window,

doing so line by line:

>>> from psychopy import visual, core

and then:

>>> win = visual.Window()

and so on; watch what happens after each line:

>>> msg = visual.TextStim(win, text=u"\u00A1Hola mundo!")

>>> msg.draw()

>>> win.flip()

and so on. This lets you try things out and see what happens line-by-line (which is how python goes through your

program).


CHAPTER

TEN

GENERAL ISSUES

These are issues that users should be aware of, whether they are using Builder or Coder views.

10.1 Monitor Center

PsychoPy provides a simple and intuitive way for you to calibrate your monitor and provide other information about

it and then import that information into your experiment.

Information is inserted in the Monitor Center (Tools menu), which allows you to store information about multiple

monitors and keep track of multiple calibrations for the same monitor.

For experiments written in the Builder view, you can then import this information by simply specifying the name of

the monitor that you wish to use in the Experiment settings dialog. For experiments created as scripts you can retrieve

the information when creating the Window by simply naming the monitor that you created in Monitor Center. e.g.:

from psychopy import visual

win = visual.Window([1024,768], monitor='SonyG500')

Of course, the name of the monitor in the script needs to match perfectly the name given in the Monitor Center.

10.1.1 Real world units

One of the particular features of PsychoPy is that you can specify the size and location of stimuli in units that are

independent of your particular setup, such as degrees of visual angle (see Units for the window and stimuli). In order

for this to be possible you need to inform PsychoPy of some characteristics of your monitor. Your choice of units

determines the information you need to provide:

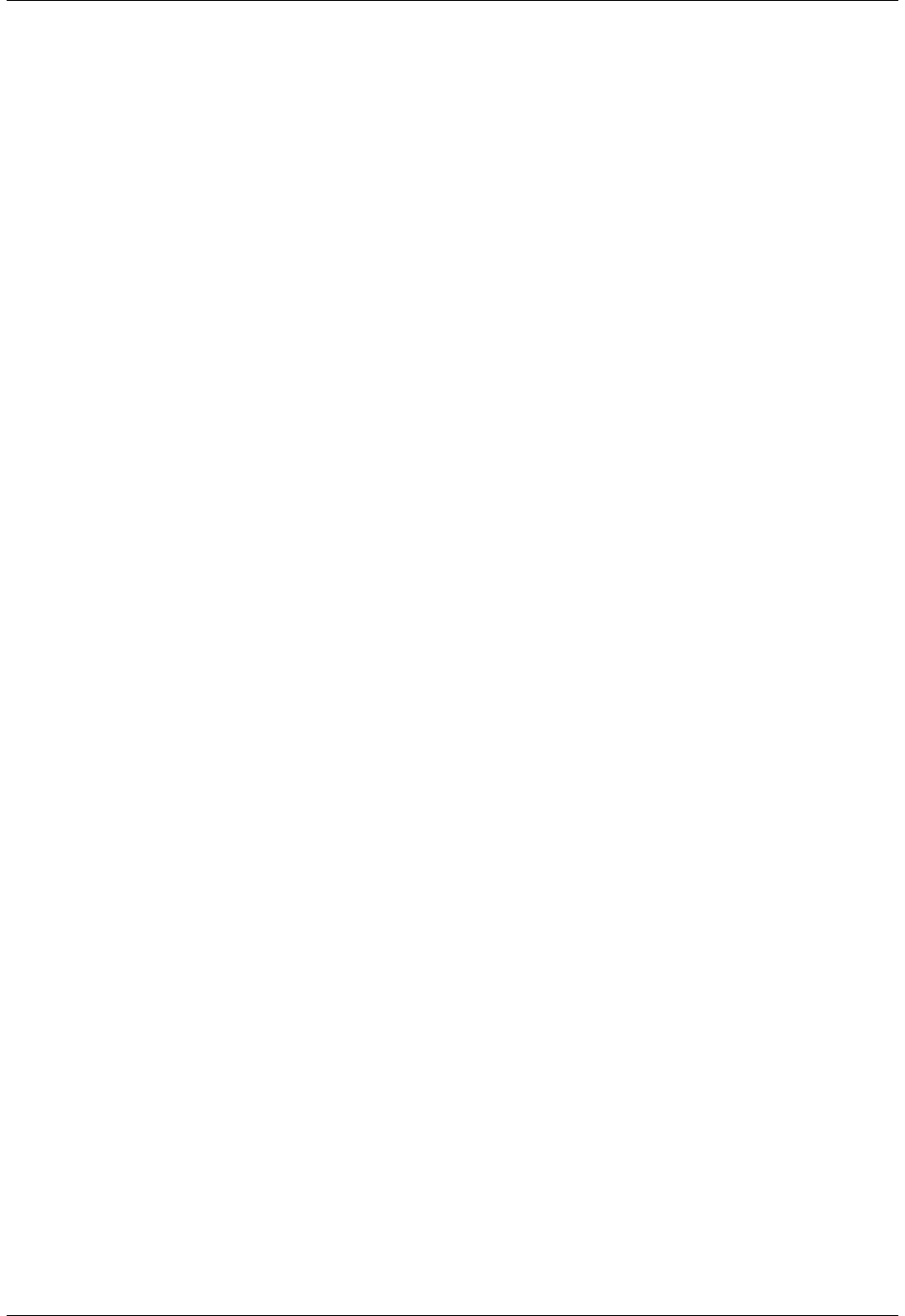

| Units | Requires |
|---|---|
| ‘norm’ (normalised to width/height) | n/a |
| ‘pix’ (pixels) | Screen width in pixels |
| ‘cm’ (centimeters on the screen) | Screen width in pixels and screen width in cm |
| ‘deg’ (degrees of visual angle) | Screen width (pixels), screen width (cm) and distance (cm) |

10.1.2 Calibrating your monitor

PsychoPy can also store and use information about the gamma correction required for your monitor. If you have

a Spectrascan PR650 (other devices will hopefully be added) you can perform an automated calibration in which

PsychoPy will measure the necessary gamma value to be applied to your monitor. Alternatively this can be added

manually into the grid to the right of the Monitor Center. To run a calibration, connect the PR650 via the serial port

and, immediately after turning it on, press the Find PR650 button in the Monitor Center.


Note that, if you don’t have a photometer to hand then there is a method for determining the necessary gamma value

psychophysically included in PsychoPy (see gammaMotionNull and gammaMotionAnalysis in the demos menu).

The two additional tables in the Calibration box of the Monitor Center provide conversion from DKL and LMS colour

spaces to RGB.

10.2 Units for the window and stimuli

One of the key advantages of PsychoPy over many other experiment-building software packages is that stimuli can be

described in a wide variety of real-world, device-independent units. In most other systems you provide the stimuli at

a fixed size and location in pixels, or percentage of the screen, and then have to calculate how many cm or degrees of

visual angle that was.

In PsychoPy, after providing information about your monitor, via the Monitor Center, you can simply specify your

stimulus in the unit of your choice and allow PsychoPy to calculate the appropriate pixel size for you.

Your choice of unit depends on the circumstances. For conducting demos, the two normalised units (‘norm’ and

‘height’) are often handy because the stimulus scales naturally with the window size. For running an experiment it’s

usually best to use something like ‘cm’ or ‘deg’ so that the stimulus is a fixed size irrespective of the monitor/window.

For all units, the centre of the screen is represented by coordinates (0,0), negative values mean down/left, positive

values mean up/right.

10.2.1 Height units

With ‘height’ units everything is specified relative to the height of the window (note the window, not the screen).

As a result, the dimensions of a screen with standard 4:3 aspect ratio will range from (-0.6667,-0.5) in the bottom left to (+0.6667,+0.5) in the top right. For a standard widescreen (16:10 aspect ratio) the bottom left of the screen is (-0.8,-0.5) and the top right is (+0.8,+0.5). This type of unit can be useful in that it scales with window size, unlike Degrees of visual angle or Centimeters on screen, but stimuli remain square, unlike Normalised units. Obviously it has the disadvantage that the location of the right and left edges of the screen have to be determined from a knowledge of the screen dimensions. (These can be determined at any point by the Window.size attribute.)

Spatial frequency: cycles per stimulus (so will scale with the size of the stimulus).

Requires : No monitor information
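The corner coordinates quoted above follow directly from the aspect ratio of the window. As a minimal sketch (the function name `height_unit_corners` is hypothetical and is not part of PsychoPy), the arithmetic looks like this:

```python
def height_unit_corners(win_width_px, win_height_px):
    """Return (bottom_left, top_right) of a window in 'height' units.

    The vertical extent is always -0.5 to +0.5; the horizontal extent
    is scaled by the aspect ratio (width / height) of the window.
    """
    half_width = 0.5 * win_width_px / float(win_height_px)
    return (-half_width, -0.5), (half_width, 0.5)


# A 4:3 window and a 16:10 window, as in the text
corners_4_3 = height_unit_corners(1024, 768)
corners_16_10 = height_unit_corners(1680, 1050)
print(corners_4_3)
print(corners_16_10)
```

In a real script the pixel dimensions would come from the Window.size attribute mentioned above.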

10.2.2 Normalised units

In normalised (‘norm’) units the window ranges in both x and y from -1 to +1. That is, the top right of the window

has coordinates (1,1), the bottom left is (-1,-1). Note that, in this scheme, setting the height of the stimulus to be 1.0,

will make it half the height of the window, not the full height (because the window runs from -1 to +1, a total height of 2).

Also note that specifying the width and height to be equal will not result in a square stimulus if your window is not

square - the image will have the same aspect ratio as your window. e.g. on a 1024x768 window the size=(0.75,1) will

be square.

Spatial frequency: cycles per stimulus (so will scale with the size of the stimulus).

Requires : No monitor information
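To see why equal width and height in ‘norm’ units do not give a square stimulus, it can help to convert a size to pixels by hand. The helper below is a hypothetical illustration (not a PsychoPy function); it assumes only that the window spans 2 normalised units in each direction, as described above:

```python
def norm_size_to_pix(size_norm, win_size_px):
    """Convert a (width, height) size in 'norm' units to pixels.

    The window spans 2 units (-1 to +1) in each direction, so one unit
    corresponds to half the window width or half the window height.
    """
    width_px = size_norm[0] * win_size_px[0] / 2.0
    height_px = size_norm[1] * win_size_px[1] / 2.0
    return width_px, height_px


window = (1024, 768)
not_square = norm_size_to_pix((1, 1), window)   # equal norm sizes
square = norm_size_to_pix((0.75, 1), window)    # the example from the text
print(not_square)
print(square)
```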

10.2.3 Centimeters on screen

Set the size and location of the stimulus in centimeters on the screen.


Spatial frequency: cycles per cm

Requires : information about the screen width in cm and size in pixels

Assumes : pixels are square. Can be verified by drawing a stimulus with matching width and height and verifying that

it is in fact square. For a CRT this can be controlled by setting the size of the viewable screen (settings on the monitor

itself).

10.2.4 Degrees of visual angle

Use degrees of visual angle to set the size and location of the stimulus. This is, of course, dependent on the distance

that the participant sits from the screen as well as the screen itself, so make sure that this is controlled, and remember

to change the setting in Monitor Center if the viewing distance changes.

Spatial frequency: cycles per degree

Requires : information about the screen width in cm and pixels and the viewing distance in cm

Assumes : that pixels are square (see above) and that all parts of the screen are a constant distance from the eye
(i.e. that the screen is curved!). This (clearly incorrect) assumption is common to most studies that report the size of

their stimulus in degrees of visual angle. The resulting error is small at moderate eccentricities (a 0.2% error in size

calculation at 3 deg eccentricity) but grows as stimuli are placed further from the centre of the screen (a 2% error at 10

deg). For studies of peripheral vision this should be corrected for. PsychoPy also makes no correction for the thickness

of the screen glass, which refracts the image slightly.
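The conversion implied by these requirements can be sketched as follows. This is an illustration of the constant-distance assumption described above, not PsychoPy's own conversion code; the function names and the example monitor values (57 cm viewing distance, 40 cm wide, 1024 pixels wide) are assumptions made for the example:

```python
import math


def deg_to_cm(degrees, distance_cm):
    """Convert degrees of visual angle to cm on the screen.

    Uses a fixed number of cm per degree, i.e. it treats every part of
    the screen as being the same distance from the eye.
    """
    cm_per_degree = distance_cm * math.tan(math.radians(1.0))
    return degrees * cm_per_degree


def cm_to_pix(cm, screen_width_px, screen_width_cm):
    """Convert cm on the screen to pixels, assuming square pixels."""
    return cm * screen_width_px / float(screen_width_cm)


def deg_to_pix(degrees, distance_cm, screen_width_px, screen_width_cm):
    """Chain the two conversions: degrees -> cm -> pixels."""
    return cm_to_pix(deg_to_cm(degrees, distance_cm),
                     screen_width_px, screen_width_cm)


# Hypothetical monitor: 57 cm away, 40 cm and 1024 pixels wide
size_px = deg_to_pix(2.0, distance_cm=57, screen_width_px=1024,
                     screen_width_cm=40)
print("2 deg is roughly %.1f pixels" % size_px)
```

This makes it clear why all three pieces of monitor information are needed, and why the result changes whenever the viewing distance changes.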

10.2.5 Pixels on screen

You can also specify the size and location of your stimulus in pixels. Obviously this has the disadvantage that sizes

are specific to your monitor (because all monitors differ in pixel size).

Spatial frequency: ‘cycles per pixel’ (this catches people out but is used to be in keeping with the other units). If using pixels as your units you probably want a spatial frequency in the range 0.2-0.001 (i.e. from 1 cycle every 5 pixels to one every 1000 pixels).

Requires : information about the size of the screen (not window) in pixels, although this can often be deduced from the operating system if it has been set correctly there.

Assumes: nothing

10.3 Color spaces

The color of stimuli can be specified when creating a stimulus and when using setColor() in a variety of ways. There

are three basic color spaces that PsychoPy can use, RGB, DKL and LMS but colors can also be specified by a name

(e.g. ‘DarkSalmon’) or by a hexadecimal string (e.g. ‘#00FF00’).

examples:

stim = visual.PatchStim(win, color=[1,-1,-1], colorSpace='rgb')  # will be red

stim.setColor('Firebrick')  # one of the web/X11 color names

stim.setColor('#FFFAF0')  # an off-white

stim.setColor([0,90,1], colorSpace='dkl')  # modulate along S-cone axis in isoluminant plane

stim.setColor([1,0,0], colorSpace='lms')  # modulate only on the L cone

stim.setColor([1,1,1], colorSpace='rgb')  # all guns to max

stim.setColor([1,0,0])  # this is ambiguous - you need to specify a color space


10.3.1 Colors by name

Any of the web/X11 color names can be used to specify a color. These are then converted into RGB space by PsychoPy.

These are not case sensitive, but should not include any spaces.

10.3.2 Colors by hex value

This is really just another way of specifying the r,g,b values of a color, where each gun’s value is given by two

hexadecimal characters. For some examples see this chart. To use these in PsychoPy they should be formatted as a

string, beginning with # and with no spaces. (NB on a British Mac keyboard the # key is hidden - you need to press

Alt-3)

10.3.3 RGB color space

This is the simplest color space, in which colors are represented by a triplet of values that specify the red green and

blue intensities. These three values each range between -1 and 1.

Examples:

• [1,1,1] is white

• [0,0,0] is grey

• [-1,-1,-1] is black

• [1.0,-1,-1] is red

• [1.0,0.6,0.6] is pink

The reason that these colors are expressed ranging between 1 and -1 (rather than 0:1 or 0:255) is that many experiments,

particularly in visual science where PsychoPy has its roots, express colors as deviations from a grey screen. Under

that scheme a value of -1 is the maximum decrement from grey and +1 is the maximum increment above grey.

Note that PsychoPy will use your monitor calibration to linearize this for each gun. E.g., 0 will be halfway between

the minimum luminance and maximum luminance for each gun, if your monitor gammaGrid is set correctly.
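For readers used to 0:255 values, the relationship between the two conventions is a simple linear rescaling. The helper below is a hypothetical illustration (not part of PsychoPy) and deliberately ignores gamma correction, which PsychoPy applies separately:

```python
def signed_rgb_to_255(rgb):
    """Map an rgb triplet in the range -1:1 onto integer 0:255 values.

    -1 maps to 0, 0 maps to the midpoint and +1 maps to 255. This is a
    plain linear rescaling; no gamma correction is applied here.
    """
    return tuple(int(round((c + 1.0) / 2.0 * 255)) for c in rgb)


for name, rgb in [("white", [1, 1, 1]), ("grey", [0, 0, 0]),
                  ("black", [-1, -1, -1]), ("red", [1.0, -1, -1])]:
    print("%s %s" % (name, signed_rgb_to_255(rgb)))
```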

10.3.4 HSV color space

Another way to specify colors is in terms of their Hue, Saturation and ‘Value’ (HSV). For a description of the color

space see the Wikipedia HSV entry. The Hue in this case is specified in degrees, the saturation ranging 0:1 and the

‘value’ also ranging 0:1.

Examples:

• [0,1,1] is red

• [0,0.5,1] is pink

• [180,1,1] is cyan

• [anything, 0, 1] is white

• [anything, 0, 0.5] is grey

• [anything, anything,0] is black

Note that colors specified in this space (like in RGB space) are not going to be the same on another monitor; they are

device-specific. They simply specify the intensity of the 3 primaries of your monitor, but these differ between monitors.

As with the RGB space gamma correction is automatically applied if available.
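The HSV examples can be checked with Python's standard colorsys module. The sketch below is an illustration rather than PsychoPy's own conversion routine: it assumes hue in degrees and saturation and value in 0:1, as described above, and rescales the result to the -1:1 RGB convention used in this manual. The function name is hypothetical.

```python
import colorsys


def hsv_to_signed_rgb(hue_deg, saturation, value):
    """Convert HSV (hue in degrees, s and v in 0:1) to rgb in -1:1."""
    # colorsys expects hue as a fraction of a full turn (0:1)
    r, g, b = colorsys.hsv_to_rgb((hue_deg % 360) / 360.0, saturation, value)
    # Rescale each gun from 0:1 to -1:1
    return tuple(2.0 * c - 1.0 for c in (r, g, b))


red = hsv_to_signed_rgb(0, 1, 1)
cyan = hsv_to_signed_rgb(180, 1, 1)
grey = hsv_to_signed_rgb(123, 0, 0.5)   # hue is irrelevant when saturation is 0
print(red)
print(cyan)
print(grey)
```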


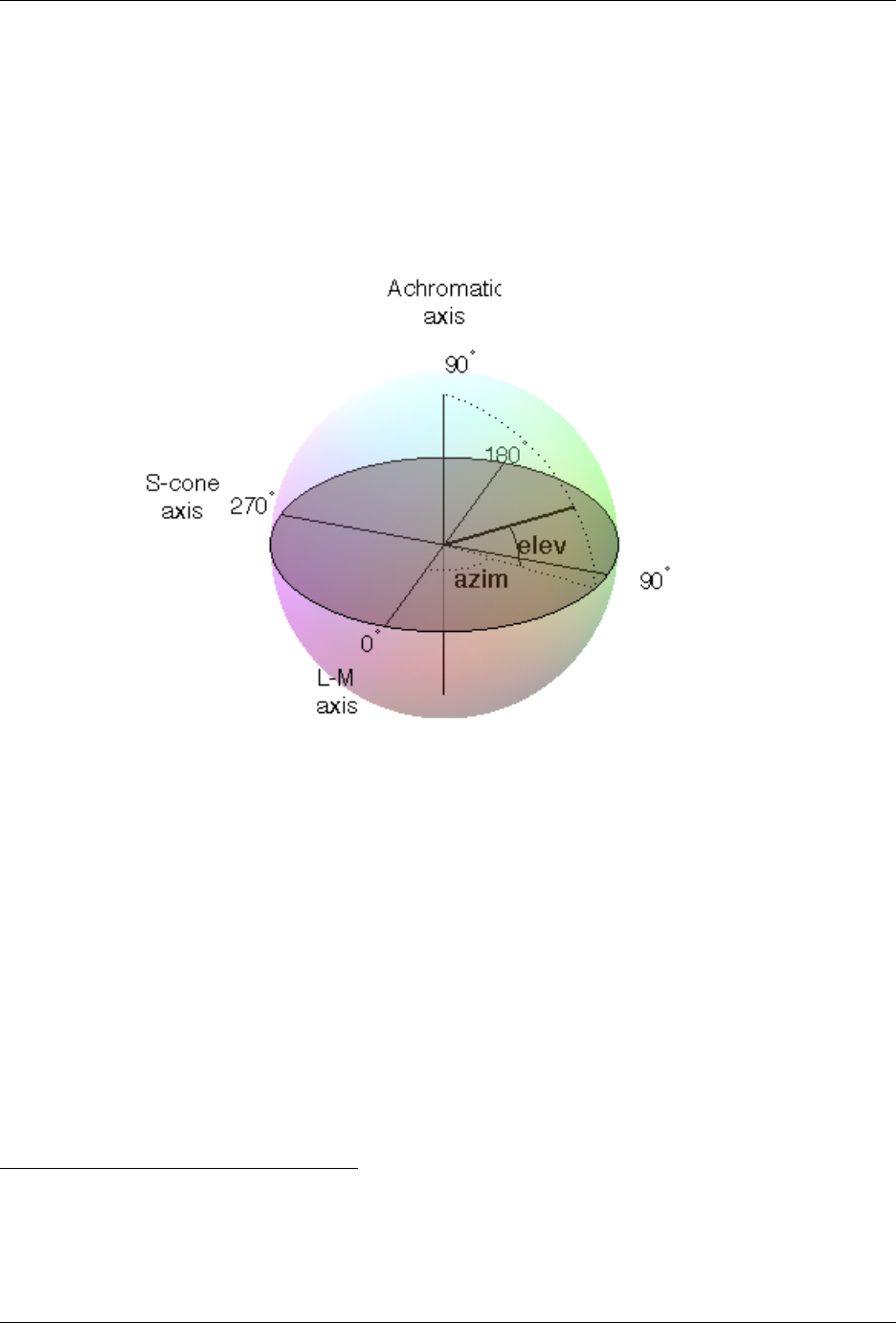

10.3.5 DKL color space

To use DKL color space the monitor should be calibrated with an appropriate spectrophotometer, such as a PR650.

In the Derrington, Krauskopf and Lennie [1] color space (based on the MacLeod and Boynton [2] chromaticity diagram) colors are represented in a 3-dimensional space using spherical coordinates that specify the elevation from the isoluminant plane, the azimuth (the hue) and the contrast (as a fraction of the maximal modulations along the cardinal axes of the space).

In PsychoPy these values are specified in units of degrees for elevation and azimuth and as a float (ranging -1:1) for

the contrast.

Note that not all colours that can be specified in DKL colour space can be reproduced on a monitor. Here is a movie

plotting in DKL space (showing cartesian coordinates, not spherical coordinates) the gamut of colors available on an

example CRT monitor.

Examples:

• [90,0,1] is white (maximum elevation aligns the color with the luminance axis)

• [0,0,1] is an isoluminant stimulus, with azimuth 0 (S-axis)

• [0,45,1] is an isoluminant stimulus, with an oblique azimuth

[1] Derrington, A.M., Krauskopf, J., & Lennie, P. (1984). Chromatic Mechanisms in Lateral Geniculate Nucleus of Macaque. Journal of Physiology, 357, 241-265.

[2] MacLeod, D. I. A. & Boynton, R. M. (1979). Chromaticity diagram showing cone excitation by stimuli of equal luminance. Journal of the Optical Society of America, 69(8), 1183-1186.


10.3.6 LMS color space

To use LMS color space the monitor should be calibrated with an appropriate spectrophotometer, such as a PR650.

In this color space you can specify the relative strength of stimulation desired for each cone independently, each with

a value from -1:1. This is particularly useful for experiments that need to generate cone isolating stimuli (for which

modulation is only affecting a single cone type).

10.4 Preferences

10.4.1 General settings

winType: PsychoPy can use one of two ‘backends’ for creating windows and drawing; pygame and pyglet. Here you

can set the default backend to be used.

units: Default units for windows and visual stimuli (‘deg’, ‘norm’, ‘cm’, ‘pix’). See Units for the window and stimuli.

Can be overridden by individual experiments.

fullscr: Should windows be created full screen by default? Can be overridden by individual experiments.

allowGUI: When the window is created, should the frame of the window and the mouse pointer be visible? If set to False then both will be hidden.

10.4.2 Application settings

These settings are common to all components of the application (Coder and Builder etc).

largeIcons: Do you want large icons? (On some versions of wx on OS X this has no effect.)

defaultView: Determines which view(s) open when the PsychoPy app starts up. Default is ‘last’, which fetches the

same views as were open when PsychoPy last closed.

runScripts: Don’t ask. ;-) Just leave this option as ‘process’ for now!

allowModuleImports (only used by win32): Allow modules to be imported at startup for analysis by source assistant. This will cause startup to be slightly slower but will speed up the first analysis of a script.

10.4.3 Coder settings

outputFont: a list of font names to be used in the output panel. The first found on the system will be used

fontSize (in pts): an integer between 6 and 24 that specifies the size of fonts

codeFontSize = integer(6,24, default=12)

outputFontSize = integer(6,24, default=12)

showSourceAsst: Do you want to show the source assistant panel (to the right of the Coder view)? On Windows this provides help about the current function if it can be found. On OS X the source assistant is of limited use and is disabled by default.

analysisLevel: If using the source assistant, how much depth should PsychoPy try to analyse the current script? Lower

values may reduce the amount of analysis performed and make the Coder view more responsive (particularly

for files that import many modules and sub-modules).

analyseAuto: If using the source assistant, should PsychoPy try to analyse the current script on every save/load of the

file? The code can be analysed manually from the tools menu


showOutput: Show the output panel in the Coder view. If shown, all python output from the session will be output to this panel. Otherwise it will be directed to the original location (typically the terminal window from which the PsychoPy application was launched).

reloadPrevFiles: Should PsychoPy fetch the files that you previously had open when it launches?

10.4.4 Builder settings

reloadPrevExp (default=False): Should PsychoPy reload the experiment that you previously had open when it launches?

componentsFolders: a list of folder pathnames that can hold additional custom components for the Builder view

hiddenComponents: a list of components to hide (eg, because you never use them)

10.4.5 Connection settings

proxy: The proxy server used to connect to the internet if needed. Must be of the form http://111.222.333.444:5555

autoProxy: PsychoPy should try to deduce the proxy automatically (if this is True and autoProxy is successful then

the above field should contain a valid proxy address).

allowUsageStats: Allow PsychoPy to ping a website when the application starts up. Please leave this set to True.

The info sent is simply a string that gives the date, PsychoPy version and platform info. There is no cost to

you: no data is sent that could identify you and PsychoPy will not be delayed in starting as a result. The aim

is simple: if we can show that lots of people are using PsychoPy there is a greater chance of it being improved

faster in the future.

checkForUpdates: PsychoPy can (hopefully) automatically fetch and install updates. This will only work for minor

updates and is still in a very experimental state (as of v1.51.00).

10.4.6 Key bindings

There are many shortcut keys that you can use in PsychoPy. For instance did you realise that you can indent or outdent

a block of code with Ctrl-[ and Ctrl-] ?

10.5 Data outputs

There are a number of different forms of output that PsychoPy can generate, depending on the study and your preferred

analysis software. Multiple file types can be output from a single experiment (e.g. Excel data file for a quick browse,

Log file to check for error messages and PsychoPy data file (.psydat) for detailed analysis).

10.5.1 Log file

Log files are actually rather difficult to use for data analysis but provide a chronological record of everything that

happened during your study. The level of content in them depends on you. See Logging data for further information.

10.5.2 PsychoPy data file (.psydat)

This is actually a TrialHandler or StairHandler object that has been saved to disk with the python cPickle

module.


These files are designed to be used by users with previous experience of python and, probably, matplotlib.

The contents of the file can be explored with dir(), as any other python object.

These files are ideal for batch analysis with a python script and plotting via matplotlib. They contain more information

than the Excel or csv data files, and can even be used to (re)create those files.

Of particular interest might be the attributes of the Handler:

extraInfo the extraInfo dictionary provided to the Handler during its creation

trialList the list of dictionaries provided to the Handler during its creation

data a dictionary of 2D numpy arrays. Each entry in the dictionary represents a type of data (e.g.

if you added ‘rt’ data during your experiment using psychopy.data.TrialHandler.addData() then

‘rt’ will be a key). For each of those entries the 2D array represents the condition number and

repeat number (remember that these start at 0 in python, unlike matlab(TM) which starts at 1)

For example, to open a psydat file and examine some of its contents:

import numpy  # needed for numpy.mean() below

from psychopy.misc import fromFile

datFile = fromFile('fileName.psydat')

# get info (added when the handler was created)

print datFile.extraInfo

# get data

print datFile.data

# get list of conditions

conditions = datFile.trialList

for condN, condition in enumerate(conditions):

    # one row of the 'response' array per condition

    print condition, datFile.data['response'][condN], numpy.mean(datFile.data['response'][condN])

Ideally, we should provide a demo script here for fetching and plotting some data (feel free to contribute).
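To make the layout of the data attribute concrete without needing a real .psydat file, here is a small stand-in built from plain Python lists. It only mimics the indexing described above (data type, then condition number, then repeat number); the values, the 'ori' condition parameter and the helper function are invented for illustration, and a real Handler stores numpy arrays rather than lists.

```python
# Stand-in for datFile.trialList: one dictionary per condition
trial_list = [{'ori': 0}, {'ori': 90}]

# Stand-in for datFile.data: one entry per data type, each indexed
# as [condition number][repeat number], both starting at 0
data = {
    'rt': [[0.52, 0.48, 0.50],    # condition 0, repeats 0..2
           [0.71, 0.69, 0.70]],   # condition 1, repeats 0..2
}


def mean(values):
    """Arithmetic mean of a non-empty sequence of numbers."""
    return sum(values) / float(len(values))


# Mean reaction time for each condition
mean_rt = [mean(data['rt'][cond_n]) for cond_n in range(len(trial_list))]

for condition, m in zip(trial_list, mean_rt):
    print("%s mean rt = %.3f" % (condition, m))
```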

10.5.3 Long-wide data file

This form of data file is the default data output from Builder experiments as of v1.74.00. Rather than summarising

data in a spreadsheet where one row represents all the data from a single condition (as in the summarised data format),

in long-wide data files the data is not collapsed by condition, but written chronologically with one row representing

one trial (hence it is typically longer than summarised data files). One column in this format is used for every single

piece of information available in the experiment, even where that information might be considered redundant (hence

the format is also ‘wide’).

Although these data files might not be quite as easy to read quickly by the experimenter, they are ideal for import and

analysis under packages such as R, SPSS or Matlab.
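Because each row of a long-wide file is one trial, a summary by condition has to be computed at analysis time. The following sketch does this with Python's standard csv module on a tiny in-memory example; the column names (ori, trial_n, rt) and the values are made up for illustration and do not reflect the exact columns PsychoPy writes.

```python
import csv
import io
from collections import defaultdict

# A made-up long-wide file: one row per trial, in chronological order
LONG_WIDE = """ori,trial_n,rt
0,0,0.52
90,1,0.71
0,2,0.48
90,3,0.69
"""


def mean_by_condition(csv_text, condition_col, value_col):
    """Collapse trial-by-trial rows into one mean value per condition."""
    groups = defaultdict(list)
    for row in csv.DictReader(io.StringIO(csv_text)):
        groups[row[condition_col]].append(float(row[value_col]))
    return dict((cond, sum(vals) / len(vals)) for cond, vals in groups.items())


summary = mean_by_condition(LONG_WIDE, 'ori', 'rt')
print(summary)
```

The same collapse is what the summarised formats do for you, at the cost of discarding the trial order.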

10.5.4 Excel data file

Excel 2007 files (.xlsx) are a useful and flexible way to output data as a spreadsheet. The file format is open and

supported by nearly all spreadsheet applications (including older versions of Excel and also OpenOffice). N.B. because

.xlsx files are widely supported, the older Excel file format (.xls) is not likely to be supported by PsychoPy unless a

user contributes the code to the project.

Data from PsychoPy are output as a table, with a header row. Each row represents one condition (trial type) as given

to the TrialHandler. Each column represents a different type of data as given in the header. For some data there are multiple columns for a single entry in the header; this indicates multiple trials. For example, with a standard data file in which response time has been collected as ‘rt’ there will be a heading rt_raw with several columns, one for each trial that occurred for the various trial types, and also an rt_mean heading with just a single column giving the

mean reaction time for each condition.


If you’re creating experiments by writing scripts then you can specify the sheet name as well as file name for Excel file

outputs. This way you can store multiple sessions for a single subject (use the subject as the filename and a date-stamp

as the sheetname) or a single file for multiple subjects (give the experiment name as the filename and the participant

as the sheetname).

Builder experiments use the participant name as the file name and then create a sheet in the Excel file for each loop of

the experiment. e.g. you could have a set of practice trials in a loop, followed by a set of main trials, and these would

each receive their own sheet in the data file.

10.5.5 Delimited text files (.dlm, .csv)

For maximum compatibility, especially for legacy analysis software, you can choose to output your data as a delimited

text file. Typically this would be comma-separated values (.csv file) or tab-delimited (.dlm file). The format of those

files is exactly the same as the Excel file, but is limited by the file format to a single sheet.

10.6 Gamma correcting a monitor

Monitors typically don’t have linear outputs; when you request luminance level of 127, it is not exactly half the

luminance of value 254. For experiments that require the luminance values to be linear, a correction needs to be put

in place for this nonlinearity which typically involves fitting a power law or gamma (γ) function to the monitor output

values. This process is often referred to as gamma correction.

PsychoPy can help you perform gamma correction on your monitor, especially if you have one of the supported

photometers/spectroradiometers.

There are various different equations with which to perform gamma correction. The simple equation (10.1) is assumed

by most hardware manufacturers and gives a reasonable first approximation to a linear correction. The full gamma

correction equation (10.3) is more general, and likely more accurate especially where the lowest luminance value of the

monitor is bright, but also requires more information. It can only be used in labs that do have access to a photometer

or similar device.

10.6.1 Simple gamma correction

The simple form of correction (as used by most hardware and software) is this:

$$L(V) = a + kV^{\gamma} \tag{10.1}$$

where $L$ is the final luminance value, $V$ is the requested intensity (ranging 0 to 1), and $a$, $k$ and $\gamma$ are constants for the monitor.

This equation assumes that the luminance where the monitor is set to ‘black’ (V=0) comes entirely from the surround

and is therefore not subject to the same nonlinearity as the monitor. If the monitor itself contributes significantly to $a$ then the function may not fit very well and the correction will be poor.

The advantage of this function is that the calibrating system (PsychoPy in this case) does not need to know anything

more about the monitor than the gamma value itself (for each gun). For the full gamma equation (10.3), the system

needs to know about several additional variables. The look-up table (LUT) values required to give a (roughly) linear

luminance output can be generated by:

$$LUT(V) = V^{1/\gamma} \tag{10.2}$$

where $V$ is the entry in the LUT, between 0 (black) and 1 (white).
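As a rough illustration of equation (10.2), the sketch below builds a look-up table for a single gun and checks that passing it through an idealised display, one that raises its input to the power gamma, gives a linear ramp. The function name, the gamma value and the number of entries are assumptions chosen for the example; this is not PsychoPy's calibration code.

```python
def simple_gamma_lut(gamma, n_entries=256):
    """Build a LUT using the simple inverse, LUT(V) = V ** (1 / gamma).

    V runs linearly from 0 (black) to 1 (white) over the table.
    """
    last = float(n_entries - 1)
    return [(i / last) ** (1.0 / gamma) for i in range(n_entries)]


gamma = 2.0                      # made-up value for one gun
lut = simple_gamma_lut(gamma)

# An idealised display applies V ** gamma, so the output should be linear
displayed = [v ** gamma for v in lut]
print("%.4f %.4f %.4f" % (displayed[0], displayed[51], displayed[255]))
```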


10.6.2 Full gamma correction

For very accurate gamma correction PsychoPy uses a more general form of the equation above, which can separate

the contribution of the monitor and the background to the lowest luminance level:

$$L(V) = a + (b + kV)^{\gamma} \tag{10.3}$$

This equation makes no assumption about the origin of the base luminance value, but requires that the system knows the values of $b$ and $k$ as well as $\gamma$.

The inverse values, required to build the LUT are found by:

$$LUT(V) = \frac{\left((1 - V)b^{\gamma} + V(b + k)^{\gamma}\right)^{1/\gamma} - b}{k} \tag{10.4}$$

This is derived below, for the interested reader. ;-)

And the associated luminance values for each point in the LUT are given by:

$$L(V) = a + (1 - V)b^{\gamma} + V(b + k)^{\gamma}$$

10.6.3 Deriving the inverse full equation

The difficulty with the full gamma equation (10.3) is that the presence of the $b$ value complicates the issue of calculating the inverse values for the LUT. The simple inverse of (10.3) as a function of output luminance values is:

$$LUT(L) = \frac{(L - a)^{1/\gamma} - b}{k} \tag{10.5}$$

To use this equation we need to first calculate the linear set of luminance values, $L$, that we are able to produce with the current monitor and lighting conditions and then deduce the LUT value needed to generate that luminance value.

We need to insert into the LUT the values between 0 and 1 (to use the maximum range) that map onto the linear range

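from the minimum, $m$, to the maximum, $M$, possible luminance. From the parameters in (10.3) it is clear that:

$$\begin{aligned}
m &= a + b^{\gamma} \\
M &= a + (b + k)^{\gamma}
\end{aligned} \tag{10.6}$$

Thus, the luminance value, $L$, at any given point in the LUT, $V$, is given by

$$\begin{aligned}
L(V) &= m + (M - m)V \\
&= a + b^{\gamma} + \left(a + (b + k)^{\gamma} - a - b^{\gamma}\right)V \\
&= a + b^{\gamma} + \left((b + k)^{\gamma} - b^{\gamma}\right)V \\
&= a + (1 - V)b^{\gamma} + V(b + k)^{\gamma}
\end{aligned} \tag{10.7}$$

where $V$ is the position in the LUT as a fraction.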

Now, to generate the LUT as needed we simply take the inverse of (10.3):

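$$LUT(L) = \frac{(L - a)^{1/\gamma} - b}{k} \tag{10.8}$$

and substitute our $L(V)$ values from (10.7):

$$\begin{aligned}
LUT(V) &= \frac{\left(a + (1 - V)b^{\gamma} + V(b + k)^{\gamma} - a\right)^{1/\gamma} - b}{k} \\
&= \frac{\left((1 - V)b^{\gamma} + V(b + k)^{\gamma}\right)^{1/\gamma} - b}{k}
\end{aligned} \tag{10.9}$$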
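The derivation can be checked numerically. The sketch below implements the forward model (10.3) and the inverse LUT (10.9) for one gun, then confirms that feeding the LUT values through the forward model gives equally spaced luminances. The function names and the parameter values are made up for illustration; real values would come from a photometer measurement.

```python
def luminance(v, a, b, k, gamma):
    """Forward model, equation (10.3): L(V) = a + (b + k*V) ** gamma."""
    return a + (b + k * v) ** gamma


def full_gamma_lut_value(v, b, k, gamma):
    """Inverse LUT entry, equation (10.9), for a position v in 0:1."""
    blend = (1.0 - v) * b ** gamma + v * (b + k) ** gamma
    return (blend ** (1.0 / gamma) - b) / k


# Made-up monitor parameters for one gun
a, b, k, gamma = 0.5, 0.1, 0.9, 2.2

n = 5
positions = [i / float(n - 1) for i in range(n)]
corrected = [luminance(full_gamma_lut_value(v, b, k, gamma), a, b, k, gamma)
             for v in positions]

# Successive differences should all be equal if the output is linear
steps = [corrected[i + 1] - corrected[i] for i in range(n - 1)]
print(["%.6f" % s for s in steps])
```

Note that $a$ does not appear in the LUT itself; it cancels in the substitution, as the first line of (10.9) shows.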


10.6.4 References

10.7 Timing Issues and synchronisation

One of the key requirements of experimental control software is that it has good temporal precision. PsychoPy aims to

be as precise as possible in this domain and can achieve excellent results depending on your experiment and hardware.

It also provides you with a precise log file of your experiment to allow you to check the precision with which things

occurred. Some general considerations are discussed here and there are links with Specific considerations for specific

designs.

Something that people seem to forget (not helped by the software manufacturers that keep talking about their sub-

millisecond precision) is that the monitor, keyboard and human participant DO NOT have anything like this sort of

precision. Your monitor updates every 10-20ms depending on frame rate. If you use a CRT screen then the top is

drawn before the bottom of the screen by several ms. If you use an LCD screen the whole screen can take around

20ms to switch from one image to the next. Your keyboard has a latency of 4-30ms, depending on brand and system.

So, yes, PsychoPy’s temporal precision is as good as most other equivalent applications, for instance the duration for

which stimuli are presented can be synchronised precisely to the frame, but the overall accuracy is likely to be severely

limited by your experimental hardware. To get very precise timing of responses etc, you need to use specialised

hardware like button boxes and you need to think carefully about the physics of your monitor.

Warning: The information about timing in PsychoPy assumes that your graphics card is capable of synchronising

with the monitor frame rate. For integrated Intel graphics chips (e.g. GMA 945) under Windows, this is not true

and the use of those chips is not recommended for serious experimental use as a result. Desktop systems can have

a moderate graphics card added for around £30 which will be vastly superior in performance.

10.7.1 Specific considerations for specific designs

Non-slip timing for imaging

For most behavioural/psychophysics studies timing is most simply controlled by setting some timer (e.g. a Clock())

to zero and waiting until it has reached a certain value before ending the trial. We might call this a ‘relative’ timing

method, because everything is timed from the start of the trial/epoch. In reality this will cause an overshoot of some

fraction of one screen refresh period (10ms, say). For imaging (EEG/MEG/fMRI) studies adding 10ms to each trial

repeatedly for 10 minutes will become a problem, however. After 100 stimulus presentations your stimulus and scanner

will be de-synchronised by 1 second.

There are two ways to get around this:

1. Time by frames If you are confident that you aren’t dropping frames then you could base your timing on frames

instead to avoid the problem.

2. Non-slip (global) clock timing The other way, which for imaging is probably the most sensible, is to arrange

timing based on a global clock rather than on a relative timing method. At the start of each trial you add the

(known) duration that the trial will last to a global timer and then wait until that timer reaches the necessary

value. To facilitate this, the PsychoPy Clock() was given a new add() method as of version 1.74.00

and a CountdownTimer() was also added.

The non-slip method can only be used in cases where the trial is of a known duration at its start. It cannot, for example,

be used if the trial ends when the subject makes a response, as would occur in most behavioural studies.
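The difference between the two approaches can be seen in a small simulation that needs no display. It assumes a 10 ms refresh period and an intended trial duration of 505 ms (both invented for the example), and models the fact that a trial can only end on a frame boundary. It does not use PsychoPy's clocks; it only mimics the logic of adding each known duration to a global target.

```python
FRAME_MS = 10     # assumed refresh period (a 100 Hz display)
TRIAL_MS = 505    # assumed intended duration of every trial
N_TRIALS = 100


def next_frame(t_ms, frame_ms=FRAME_MS):
    """Return the first frame boundary at or after t_ms (integer ms)."""
    return -(-t_ms // frame_ms) * frame_ms


def run_relative(n_trials, trial_ms):
    """Time each trial from its own (late) start: overshoot accumulates."""
    now = 0
    for _ in range(n_trials):
        now = next_frame(now + trial_ms)
    return now


def run_non_slip(n_trials, trial_ms):
    """Time each trial against one global target: overshoot cannot add up."""
    now = 0
    target = 0
    for _ in range(n_trials):
        target += trial_ms        # like adding the duration to a global timer
        now = next_frame(target)
    return now


ideal = N_TRIALS * TRIAL_MS
relative_drift_ms = run_relative(N_TRIALS, TRIAL_MS) - ideal
non_slip_drift_ms = run_non_slip(N_TRIALS, TRIAL_MS) - ideal
print("relative drift: %d ms, non-slip drift: %d ms"
      % (relative_drift_ms, non_slip_drift_ms))
```

With the non-slip scheme any single trial can still end up to one frame late, but the error never grows beyond that.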


Non-slip timing from the Builder

(new feature as of version 1.74.00)

When creating experiments in the Builder, PsychoPy will attempt to identify whether a particular Routine has a known

endpoint in seconds. If so then it will use non-slip timing for this Routine based on a global countdown timer called

routineTimer. Routines that are able to use this non-slip method are shown in green in the Flow, whereas Routines

using relative timing are shown in red. So, if you are using PsychoPy for imaging studies then make sure that all the

Routines within your loop of epochs are showing as green. (Typically your study will also have a Routine at the start

waiting for the first scanner pulse and this will use relative timing, which is appropriate).

Detecting dropped frames

Occasionally you will drop frames if you:

• try to do too much drawing

• do it in an inefficient manner (write poor code)

• have a poor computer/graphics card

Things to avoid:

• recreating textures for stimuli

• building new stimuli from scratch (create them once at the top of your script and then change them using

stim.setOri(ori), stim.setPos([x, y]), ...)

Turn on frame time recording

The key sometimes is knowing if you are dropping frames. PsychoPy can help with that by keeping track of frame

durations. By default, frame time tracking is turned off because many people don’t need it, but it can be turned on any

time after Window creation using setRecordFrameIntervals(), e.g.:

from psychopy import visual

win = visual.Window([800, 600])

win.setRecordFrameIntervals(True)

Since there are often dropped frames just after the system is initialised, it makes sense to start off with a fixation period,

or a ready message, and not to start recording frame times until that has ended. Obviously, if you aren’t refreshing the

window at some point (e.g. waiting for a key press with an unchanging screen) then you should turn off the recording

of frame times or it will give spurious results.

Warn me if I drop a frame

The simplest way to check if a frame has been dropped is to get PsychoPy to report a warning if it thinks a frame was

dropped:

from psychopy import visual, logging

win = visual.Window([800, 600])

win.setRecordFrameIntervals(True)

# I've got an 85Hz monitor and want to allow 4ms tolerance

win._refreshThreshold = 1/85.0 + 0.004

# set the log module to report warnings to the std output window (default is errors only)

logging.console.setLevel(logging.WARNING)

Show me all the frame times that I recorded

While frame times are being recorded, they are simply appended, every frame, to win.frameIntervals (a list). You can simply

plot these at the end of your script using pylab:

import pylab

pylab.plot(win.frameIntervals)

pylab.show()

Or you could save them to disk. A convenience function is provided for this:

win.saveFrameIntervals(fileName=None, clear=True)

The above will save the currently stored frame intervals (using the default filename, ‘lastFrameIntervals.log’) and then

clear the data. The saved file is a simple text file.

At any time you can also retrieve the time of the last frame flip using win.lastFrameT (the time is synchronised with

logging.defaultClock so it will match any logging commands that your script uses).
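Once the intervals have been recorded, counting the suspect frames is straightforward. The following is a minimal sketch, assuming a plain list of intervals in seconds such as win.frameIntervals. The function name count_dropped and the synthetic data are hypothetical, and the 4ms tolerance simply mirrors the threshold used in the warning example above.

```python
def count_dropped(frame_intervals, refresh_rate_hz, tolerance_s=0.004):
    """Count intervals longer than one refresh period plus a tolerance."""
    threshold = 1.0 / refresh_rate_hz + tolerance_s
    n_dropped = sum(1 for t in frame_intervals if t > threshold)
    return n_dropped, threshold


# Synthetic data standing in for win.frameIntervals on an 85Hz monitor:
# eight normal frames and two frames that took twice as long.
intervals = [1 / 85.0] * 8 + [2 / 85.0] * 2
n_dropped, threshold = count_dropped(intervals, refresh_rate_hz=85.0)
print("%i of %i frames exceeded %.1f ms"
      % (n_dropped, len(intervals), threshold * 1000))
```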

‘Blocking’ on the VBI

As of version 1.62 PsychoPy ‘blocks’ on the vertical blank interval meaning that, once Window.flip() has been called,

no code will be executed until that flip actually takes place. The timestamp for the above frame interval measurements

is taken immediately after the flip occurs. Run the timeByFrames demo in Coder to see the precision of these

measurements on your system. They should be within 1ms of your mean frame interval.
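A quick way to check the "within 1ms of the mean" criterion on your own recordings is sketched below. It assumes a list of frame intervals in seconds. The helper name frame_jitter and the example values are hypothetical, not taken from the timeByFrames demo.

```python
import statistics


def frame_jitter(frame_intervals):
    """Return the mean interval and the largest absolute deviation from it."""
    mean = statistics.mean(frame_intervals)
    worst = max(abs(t - mean) for t in frame_intervals)
    return mean, worst


# Made-up intervals for a nominal 100Hz display
intervals = [0.01000, 0.01002, 0.00998, 0.01001, 0.00999]
mean_interval, worst_deviation = frame_jitter(intervals)
within_1ms = worst_deviation < 0.001
print("mean=%.5f s, worst deviation=%.5f s, within 1ms: %s"
      % (mean_interval, worst_deviation, within_1ms))
```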

Note that Intel integrated graphics chips (e.g. GMA 945) under win32 do not sync to the screen at all and so blocking

on those machines is not possible.

Reducing dropped frames

There are many things that can affect the speed at which drawing is achieved on your computer. These include, but are

probably not limited to: your graphics card, CPU, operating system, running programs, stimuli, and your code itself.

Of these, the CPU and the OS appear to make rather little difference. To determine whether you are actually dropping

frames see Detecting dropped frames.

Things to change on your system:

1. make sure you have a good graphics card. Avoid integrated graphics chips, especially Intel integrated chips and

especially on laptops (because on these you don’t get to change your mind so easily later). In particular, try to

make sure that your card supports OpenGL 2.0.

2. shut down as many programs as possible, including background processes. Although modern processors are fast and often have multiple cores, substantial disk/memory access can cause frame drops. Candidates include:

• anti-virus auto-updating (if you’re allowed)

• email checking software

• file indexing software

• backup solutions (e.g. TimeMachine)

• Dropbox

• Synchronisation software

Writing optimal scripts

1. run in full-screen mode (rather than simply filling the screen with your window). This way the OS doesn’t have

to spend time working out what application is currently getting keyboard/mouse events.

2. don’t generate your stimuli when you need them. Generate them in advance and then just modify them later

with methods like setContrast(), setOri() etc.

3. calls to the following functions are comparatively slow; they require more CPU time than most other functions and then have to send a large amount of data to the graphics card. Try to use these methods in inter-trial intervals. This is especially true when you also need to load an image from disk to use as the texture.

(a) PatchStim.setTexture()

(b) RadialStim.setTexture()

(c) TextStim.setText()

4. if you don’t have OpenGL 2.0 then calls to setContrast, setRGB and setOpacity will also be slow, because they

also make a call to setTexture(). If you have shader support then this call is not necessary and a large speed

increase will result.

5. avoid loops in your python code (use numpy arrays to do maths with lots of elements)

6. if you need to create a large number (e.g. greater than 10) of similar stimuli, then try the ElementArrayStim
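To illustrate the advice about avoiding Python loops, here is a minimal sketch that moves a field of dots in two equivalent ways. The function names, the dot count, the step size and the wrap-around rule for a field spanning -1 to 1 are all assumptions made for the example, not part of PsychoPy.

```python
import numpy as np


def update_loop(xys, step):
    """Move every dot with a plain Python loop (slow for many dots)."""
    out = np.empty_like(xys)
    for i in range(xys.shape[0]):
        for j in range(xys.shape[1]):
            # shift, then wrap back into the [-1, 1) field
            out[i, j] = (xys[i, j] + step[j] + 1.0) % 2.0 - 1.0
    return out


def update_vectorised(xys, step):
    """The same update as a single numpy expression over the whole array."""
    return (xys + step + 1.0) % 2.0 - 1.0


rng = np.random.default_rng(0)
xys = rng.uniform(-1.0, 1.0, size=(500, 2))  # 500 dots, (x, y) each
step = np.array([0.01, 0.0])                 # drift to the right

new_loop = update_loop(xys, step)
new_vec = update_vectorised(xys, step)
print("results match:", np.allclose(new_loop, new_vec))
```

Both functions give the same positions, but the vectorised version does the arithmetic inside numpy rather than in the Python interpreter, which matters when the update runs on every frame.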

Possible good ideas

(It isn’t clear that these actually make a difference, but they might.)

1. disconnect the internet cable (to prevent programs performing auto-updates?)

2. on Macs you can actually shut down the Finder. It might help. See Alex Holcombe’s page here

3. use a single screen rather than two (probably there is some graphics card overhead in managing double the

number of pixels?)

Comparing Operating Systems under PsychoPy

This is an attempt to quantify the ability of PsychoPy to draw without dropping frames on a variety of hardware/software.

The following tests were conducted using the script at the bottom of the page. Note, of course that the hardware fully

differs between the Mac and Linux/Win systems below, but that both are standard off-the-shelf machines.

All of the below tests were conducted with ‘normal’ systems rather than anything that had been specifically optimised:

• the machines were connected to network

• did not have anti-virus turned off (except Ubuntu had no antivirus)

• they even all had dropbox clients running

• linux was the standard (not ‘realtime’ kernel)

No applications were actively being used by the operator while tests were run.

In order to test drawing under a variety of processing loads the test stimulus was one of:

• a single drifting Gabor

• 500 random dots continuously updating

• 750 random dots continuously updating

• 1000 random dots continuously updating

Common settings:

• Monitor was a CRT 1024x768 100Hz

• all tests were run in full screen mode with mouse hidden

System Differences:

• the iMac was lower spec than the win/linux box and running across two monitors (necessary in order to

connect to the CRT)

• the win/linux box ran off a single monitor

Each run below gives the number of dropped frames out of a run of 10,000 (100 s, or about 1.7 mins, at 100Hz).

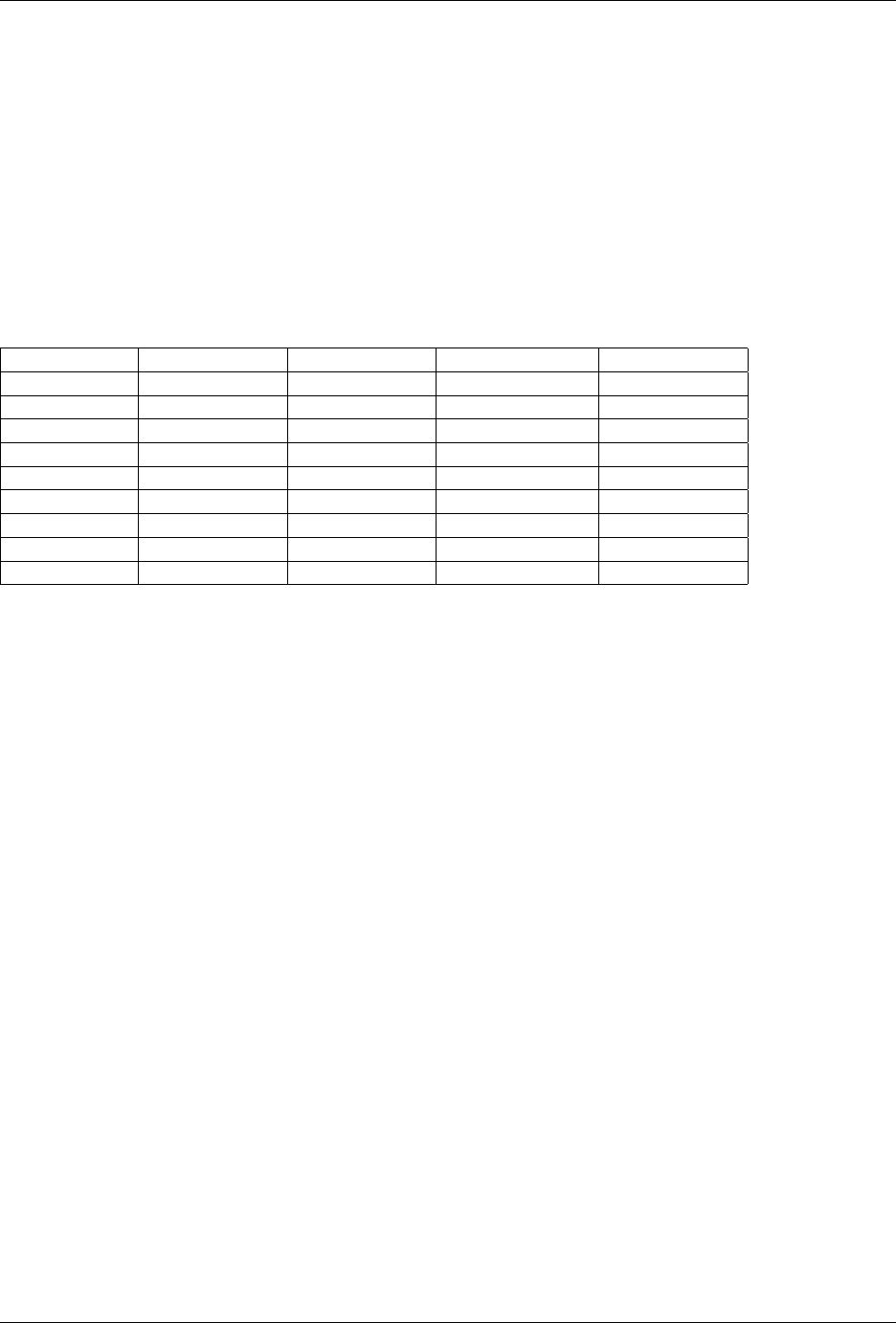

| | Win XP (SP3) | Win Seven Enterprise | Mac OSX 10.6 Snow Leopard | Ubuntu 11.10 |
|---|---|---|---|---|
| Gabor | 0 | 5 | 0 | 0 |
| 500-dot RDK | 0 | 5 | 54 | 3 |
| 750-dot RDK | 21 | 7 | aborted | 1174 |
| 1000-dot RDK | 776 | aborted | aborted | aborted |
| GPU | Radeon 5400 | Radeon 5400 | Radeon 2400 | Radeon 5400 |
| GPU driver | Catalyst 11.11 | Catalyst 11.11 | | Catalyst 11.11 |
| CPU | Core Duo 3GHz | Core Duo 3GHz | Core Duo 2.4GHz | Core Duo 3GHz |
| RAM | 4GB | 4GB | 2GB | 4GB |

I’ll gradually try to update these tests to include:

• longer runs (one per night!)

• a faster Mac

• a real-time linux kernel

CHAPTER

ELEVEN

BUILDER

Building experiments in a GUI

You can now see a YouTube PsychoPy tutorial showing you how to build a simple experiment in the Builder interface.

Note: The Builder view is now (at version 1.75) fairly well-developed and should be able to construct a wide variety

of studies. But you should still check carefully that the stimuli and response collection are as expected.

11.1 Builder concepts

11.1.1 Routines and Flow

The Builder view of the PsychoPy application is designed to allow the rapid development of a wide range of experiments

for experimental psychology and cognitive neuroscience.

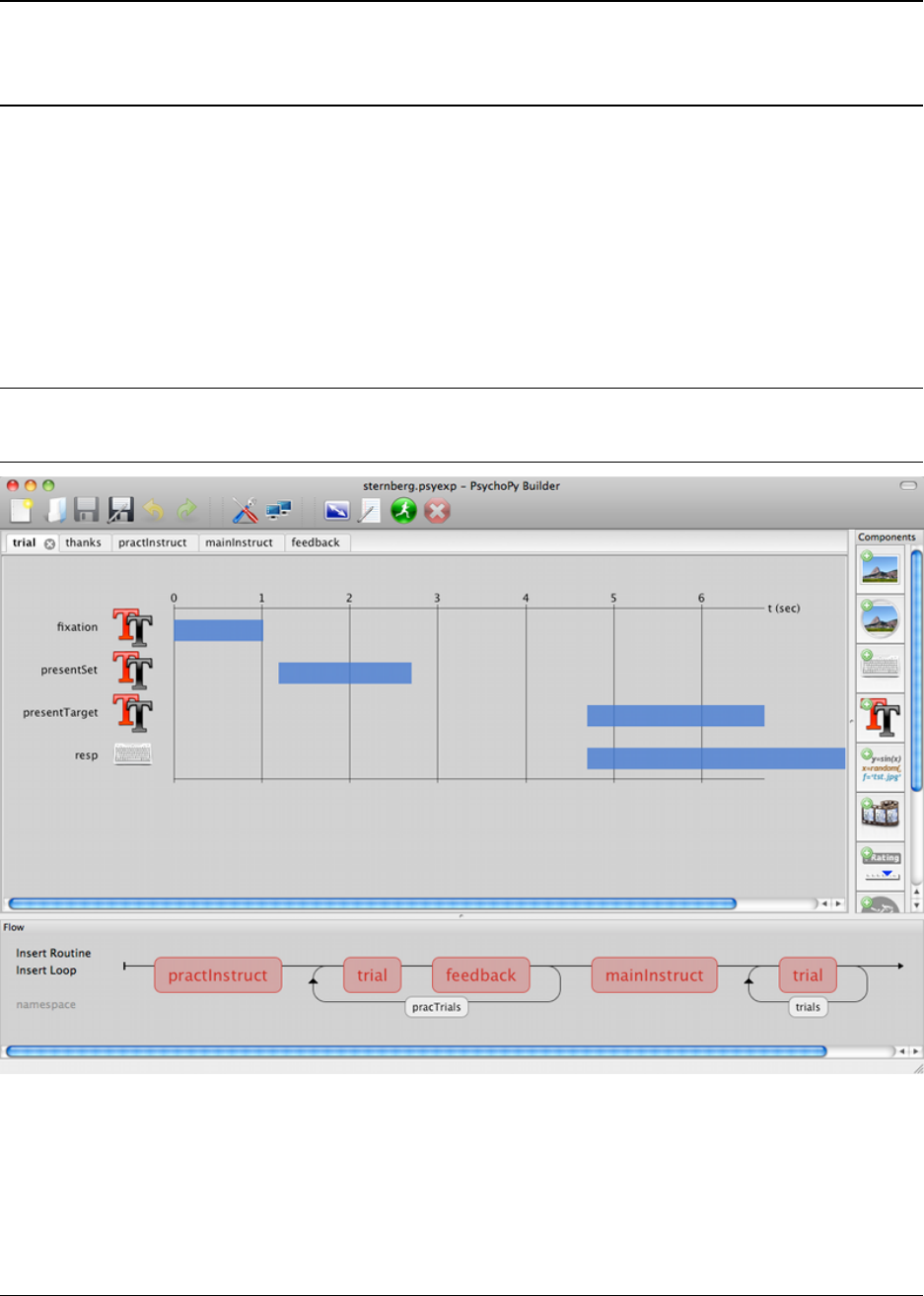

The Builder view comprises two main panels: one for viewing the experiment’s Routines (upper left) and another for

viewing the Flow (lower part of the window).

An experiment can have any number of Routines, describing the timing of stimuli, instructions and responses. These

are portrayed in a simple track-based view, similar to that of video-editing software, which allows stimuli to come on

and go off repeatedly and to overlap with each other.

The way in which these Routines are combined and/or repeated is controlled by the Flow panel. All experiments

have exactly one Flow. This takes the form of a standard flowchart allowing a sequence of routines to occur one after

another, and for loops to be inserted around one or more of the Routines. The loop also controls variables that change

between repetitions, such as stimulus attributes.

11.1.2 Example 1 - a reaction time experiment

For a simple reaction time experiment there might be 3 Routines, one that presents instructions and waits for a keypress,

one that controls the trial timing, and one that thanks the participant at the end. These could then be combined in the

Flow so that the instructions come first, followed by the trial Routine, followed by the thanks Routine, and a loop could be inserted

so that the trial Routine is repeated 4 times for each of 6 stimulus intensities.
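The structure of this Flow can be pictured as a simple sequence. The sketch below is a plain-Python illustration, not Builder output: the function name build_flow, the intensity values and the random ordering of trials are all assumptions made for the example.

```python
import random


def build_flow(intensities, n_reps, seed=None):
    """Return the order of Routines as (routine_name, intensity) pairs."""
    rng = random.Random(seed)
    # the loop: every intensity repeated n_reps times, in shuffled order
    trials = [value for value in intensities for _ in range(n_reps)]
    rng.shuffle(trials)
    flow = [("instructions", None)]
    flow += [("trial", value) for value in trials]
    flow.append(("thanks", None))
    return flow


intensities = [0.1, 0.2, 0.4, 0.6, 0.8, 1.0]  # hypothetical values
flow = build_flow(intensities, n_reps=4, seed=1)
n_trials = sum(1 for name, _ in flow if name == "trial")
print("%i Routines in total, %i of them trials" % (len(flow), n_trials))
```

The loop wraps only the trial Routine, so the instructions and thanks Routines each run once, either side of the 24 trials.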

11.1.3 Example 2 - an fMRI block design

Many fMRI experiments present a sequence of stimuli in a block. For this there are multiple ways to create the

experiment:

* We could create a single Routine that contained a number of stimuli and presented them sequentially,