Security Guide Red Hat Enterprise Linux 7

- Table of Contents

- Chapter 1. Overview of Security Topics

- Chapter 2. Security Tips for Installation

- Chapter 3. Keeping Your System Up-to-Date

- Chapter 4. Hardening Your System with Tools and Services

- 4.1. Desktop Security

- 4.2. Controlling Root Access

- 4.3. Securing Services

- 4.3.1. Risks To Services

- 4.3.2. Identifying and Configuring Services

- 4.3.3. Insecure Services

- 4.3.4. Securing rpcbind

- 4.3.5. Securing rpc.mountd

- 4.3.6. Securing NIS

- 4.3.7. Securing NFS

- 4.3.8. Securing the Apache HTTP Server

- 4.3.9. Securing FTP

- 4.3.10. Securing Postfix

- 4.3.11. Securing SSH

- 4.3.12. Securing PostgreSQL

- 4.3.13. Securing Docker

- 4.4. Securing Network Access

- 4.5. Using Firewalls

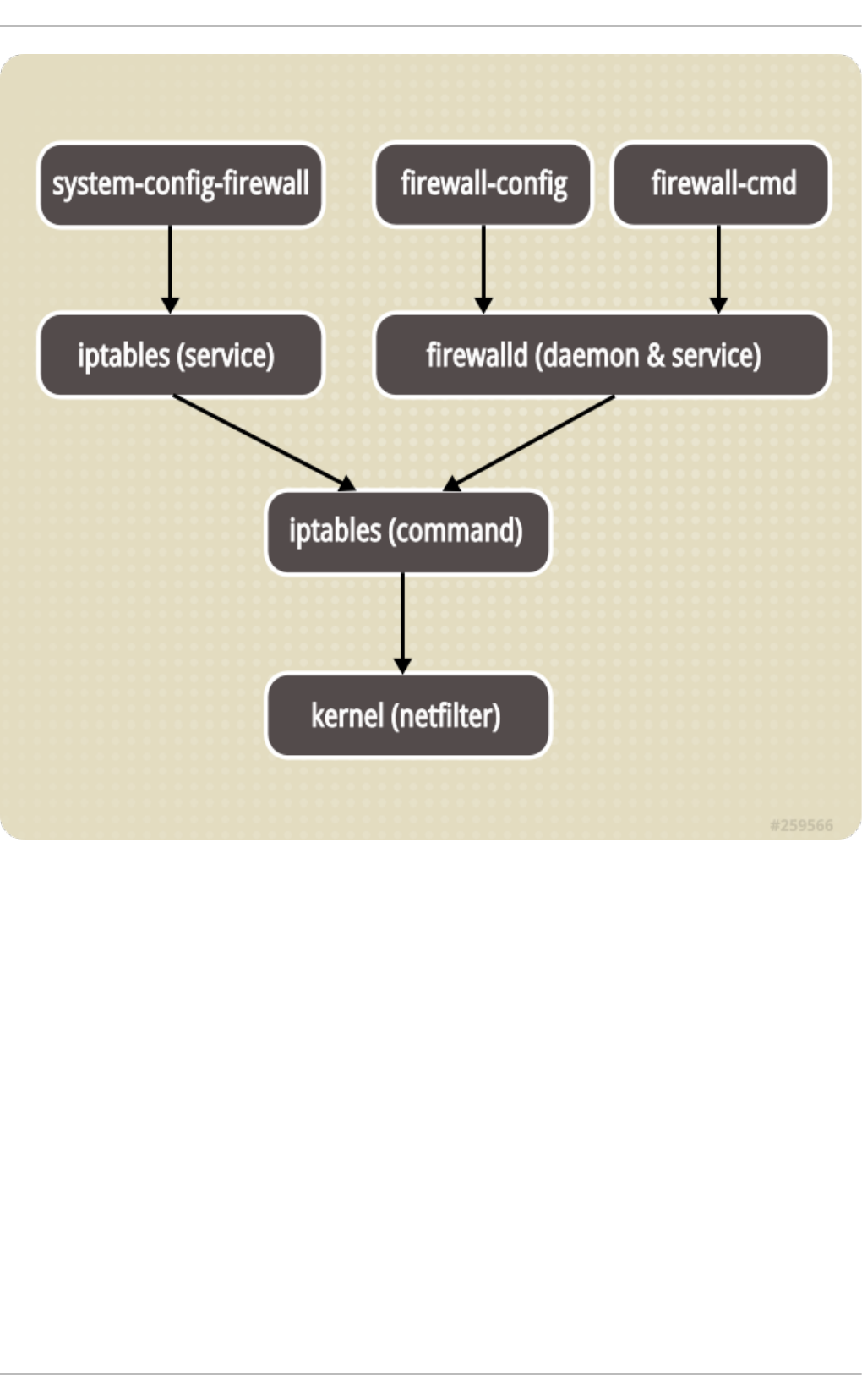

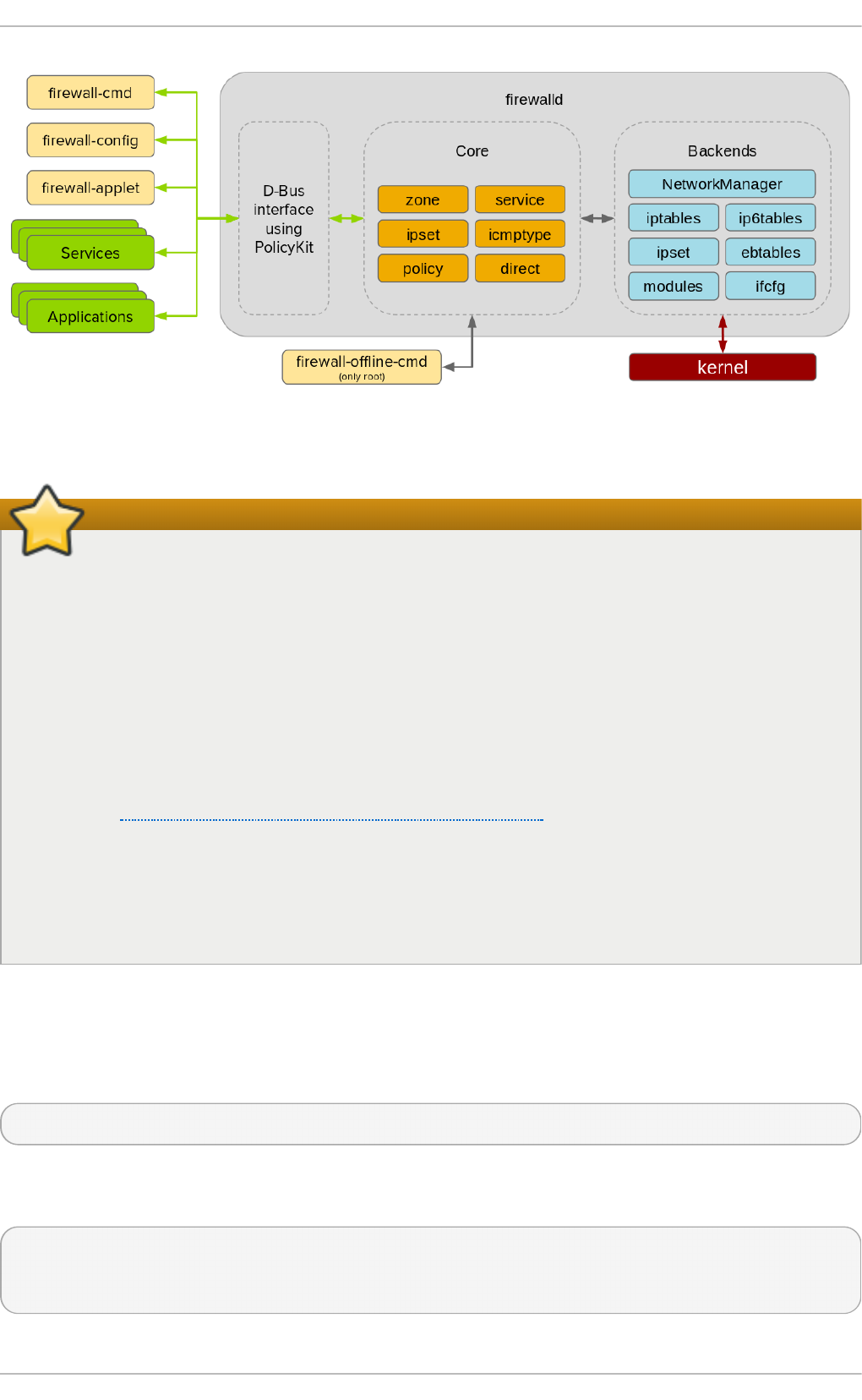

- 4.5.1. Introduction to firewalld

- 4.5.2. Installing firewalld

- 4.5.3. Configuring firewalld

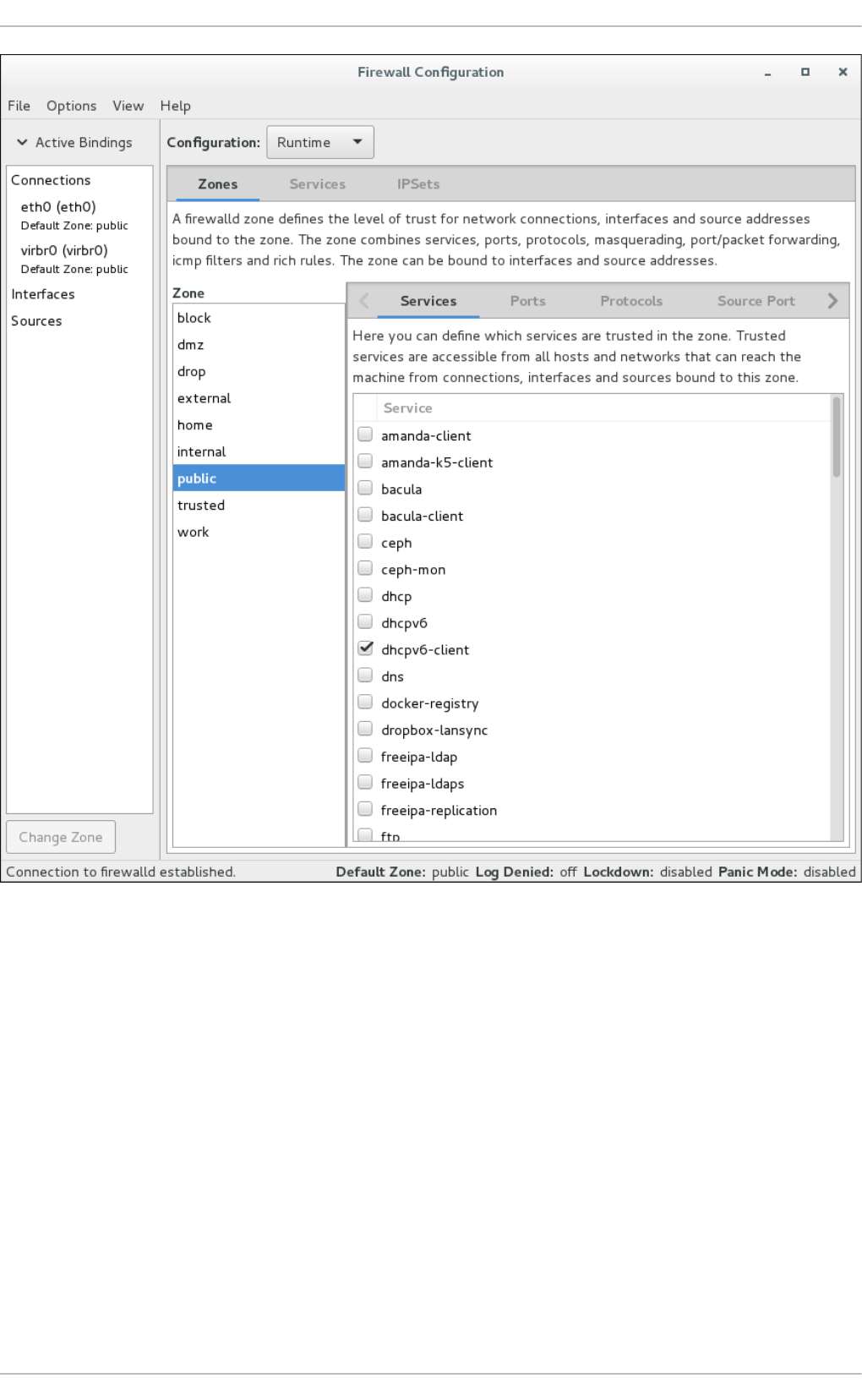

- 4.5.3.1. Configuring firewalld Using The Graphical User Interface

- 4.5.3.2. Configuring the Firewall Using the firewall-cmd Command-Line Tool

- 4.5.3.3. Viewing the Firewall Settings Using the Command-Line Interface (CLI)

- 4.5.3.4. Changing the Firewall Settings Using the Command-Line Interface (CLI)

- 4.5.3.5. Configuring the Firewall Using XML Files

- 4.5.3.6. Using the Direct Interface

- 4.5.3.7. Configuring Complex Firewall Rules with the "Rich Language" Syntax

- 4.5.3.8. Firewall Lockdown

- 4.5.3.9. Configuring Logging for Denied Packets

- 4.5.4. Using the iptables Service

- 4.5.5. Additional Resources

- 4.6. Securing DNS Traffic with DNSSEC

- 4.6.1. Introduction to DNSSEC

- 4.6.2. Understanding DNSSEC

- 4.6.3. Understanding Dnssec-trigger

- 4.6.4. VPN Supplied Domains and Name Servers

- 4.6.5. Recommended Naming Practices

- 4.6.6. Understanding Trust Anchors

- 4.6.7. Installing DNSSEC

- 4.6.8. Using Dnssec-trigger

- 4.6.9. Using dig With DNSSEC

- 4.6.10. Setting up Hotspot Detection Infrastructure for Dnssec-trigger

- 4.6.11. Configuring DNSSEC Validation for Connection Supplied Domains

- 4.6.12. Additional Resources

- 4.7. Securing Virtual Private Networks (VPNs)

- 4.7.1. IPsec VPN Using Libreswan

- 4.7.2. VPN Configurations Using Libreswan

- 4.7.3. Host-To-Host VPN Using Libreswan

- 4.7.4. Site-to-Site VPN Using Libreswan

- 4.7.5. Site-to-Site Single Tunnel VPN Using Libreswan

- 4.7.6. Subnet Extrusion Using Libreswan

- 4.7.7. Road Warrior Application Using Libreswan

- 4.7.8. Road Warrior Application Using Libreswan and XAUTH with X.509

- 4.7.9. Additional Resources

- 4.8. Using OpenSSL

- 4.8.1. Creating and Managing Encryption Keys

- 4.8.2. Generating Certificates

- 4.8.3. Verifying Certificates

- 4.8.4. Encrypting and Decrypting a File

- 4.8.5. Generating Message Digests

- 4.8.6. Generating Password Hashes

- 4.8.7. Generating Random Data

- 4.8.8. Benchmarking Your System

- 4.8.9. Configuring OpenSSL

- 4.9. Using stunnel

- 4.10. Encryption

- 4.10.1. Using LUKS Disk Encryption

- Overview of LUKS

- 4.10.1.1. LUKS Implementation in Red Hat Enterprise Linux

- 4.10.1.2. Manually Encrypting Directories

- 4.10.1.3. Add a New Passphrase to an Existing Device

- 4.10.1.4. Remove a Passphrase from an Existing Device

- 4.10.1.5. Creating Encrypted Block Devices in Anaconda

- 4.10.1.6. Additional Resources

- 4.10.2. Creating GPG Keys

- 4.10.3. Using openCryptoki for Public-Key Cryptography

- 4.10.4. Using Smart Cards to Supply Credentials to OpenSSH

- 4.10.5. Trusted and Encrypted Keys

- 4.10.6. Using the Random Number Generator

- 4.11. Hardening TLS Configuration

- 4.12. Using MACsec (IEEE 802.1AE)

- Chapter 5. System Auditing

- Use Cases

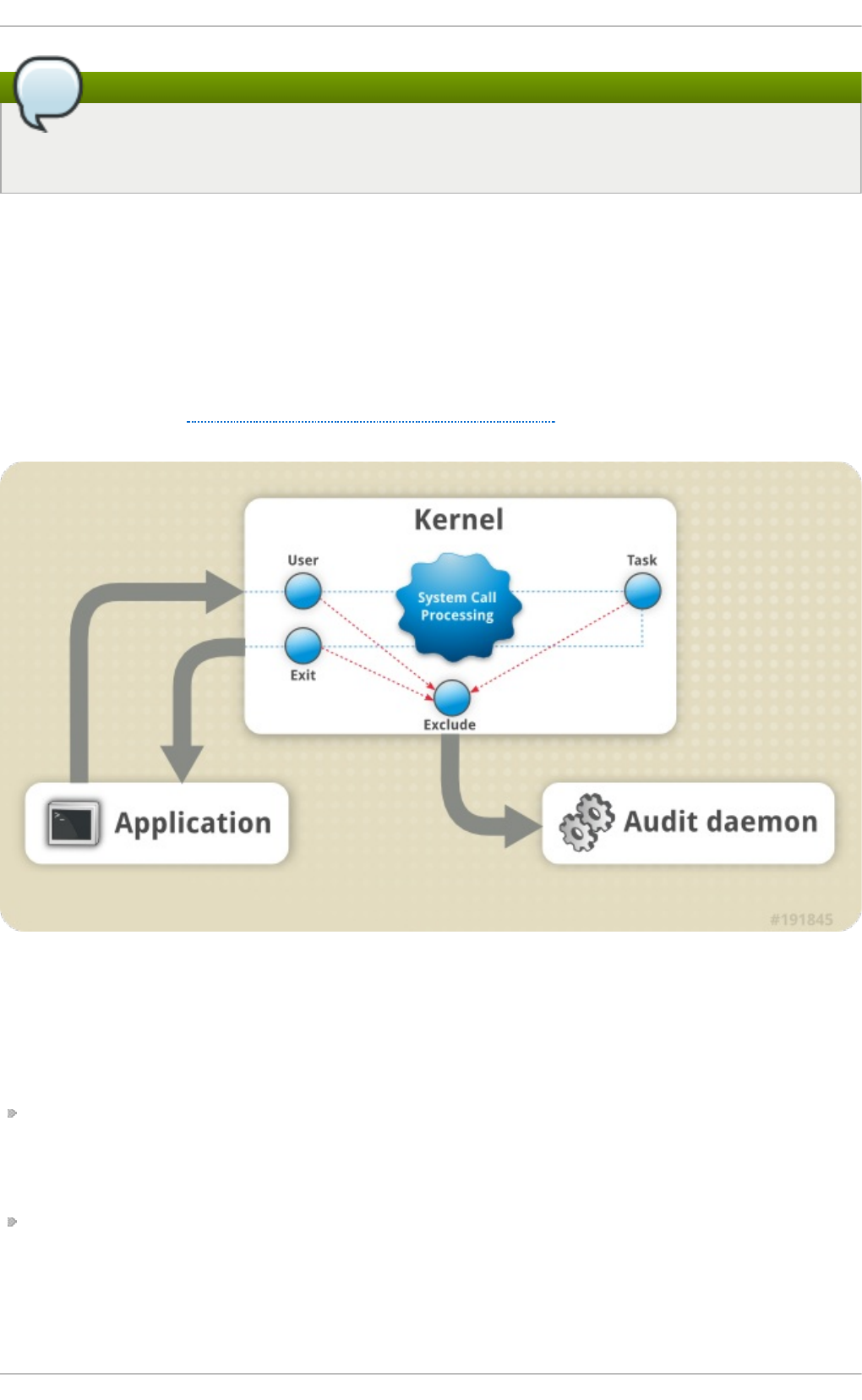

- 5.1. Audit System Architecture

- 5.2. Installing the audit Packages

- 5.3. Configuring the audit Service

- 5.4. Starting the audit Service

- 5.5. Defining Audit Rules

- 5.5.1. Defining Audit Rules with auditctl

- Defining Control Rules

- Defining File System Rules

- Defining System Call Rules

- 5.5.2. Defining Executable File Rules

- 5.5.3. Defining Persistent Audit Rules and Controls in the /etc/audit/audit.rules File

- Defining Control Rules

- Defining File System and System Call Rules

- Preconfigured Rules Files

- 5.6. Understanding Audit Log Files

- 5.7. Searching the Audit Log Files

- 5.8. Creating Audit Reports

- 5.9. Additional Resources

- Chapter 6. Compliance and Vulnerability Scanning with OpenSCAP

- 6.1. Security Compliance in Red Hat Enterprise Linux

- 6.2. Defining Compliance Policy

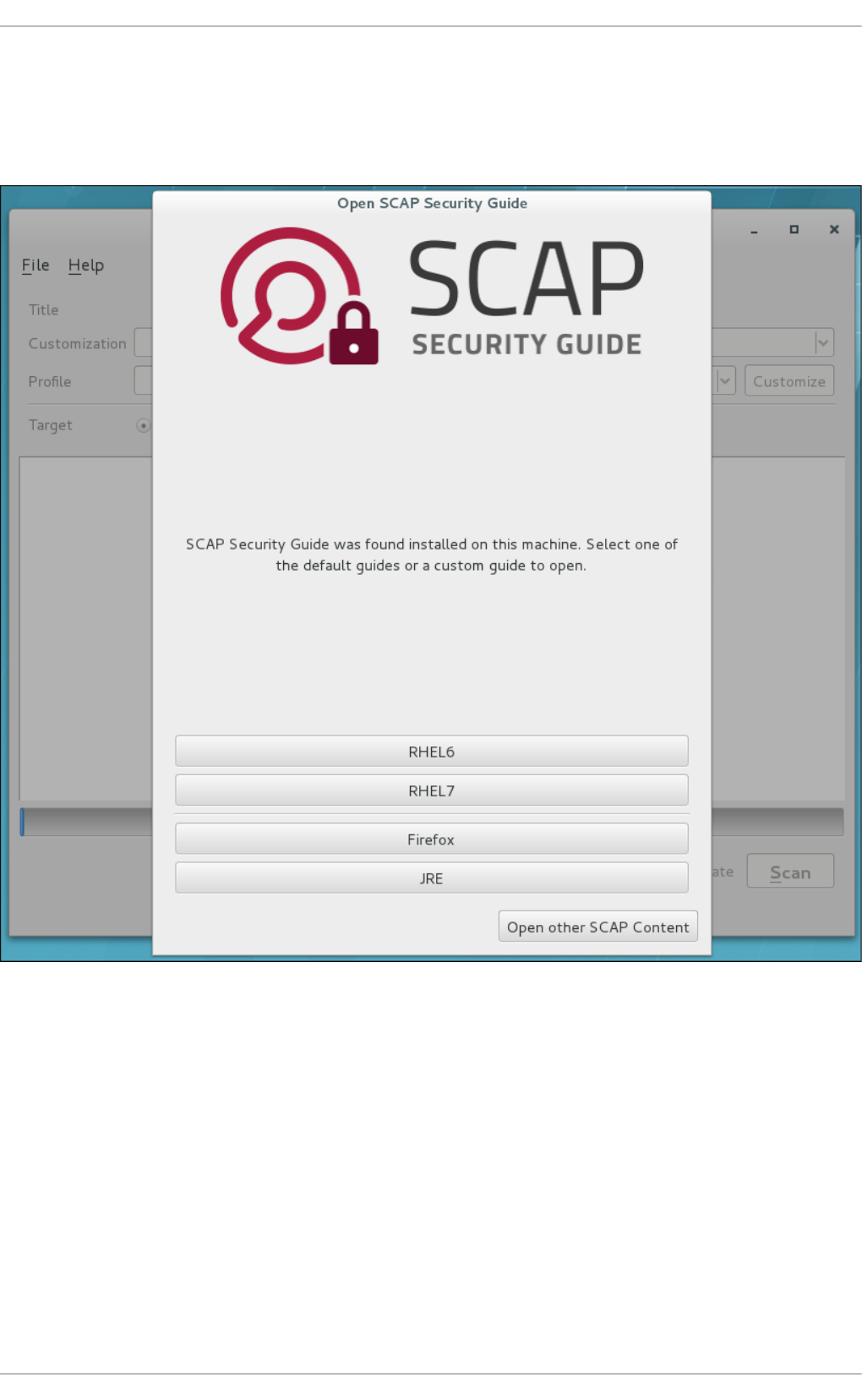

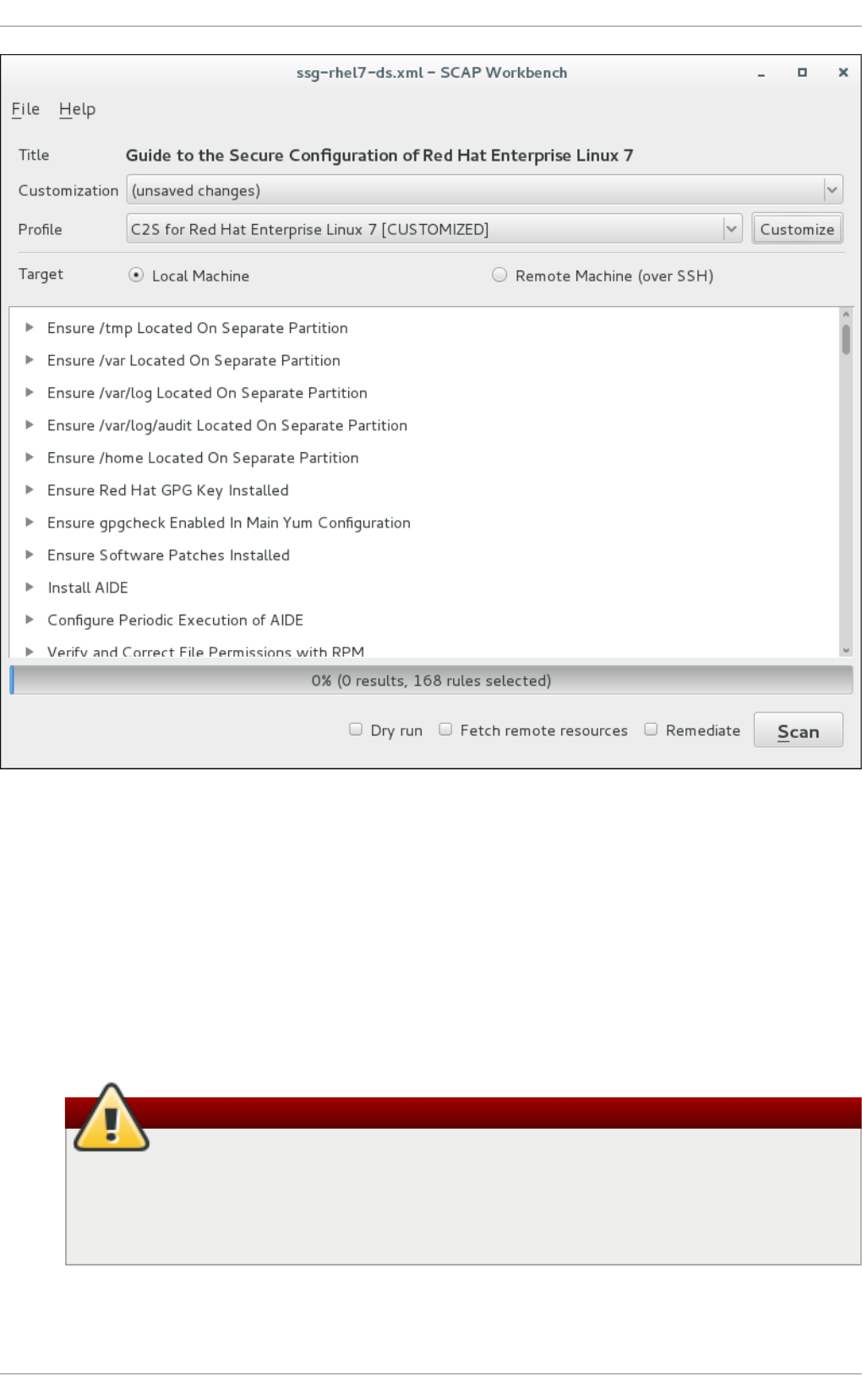

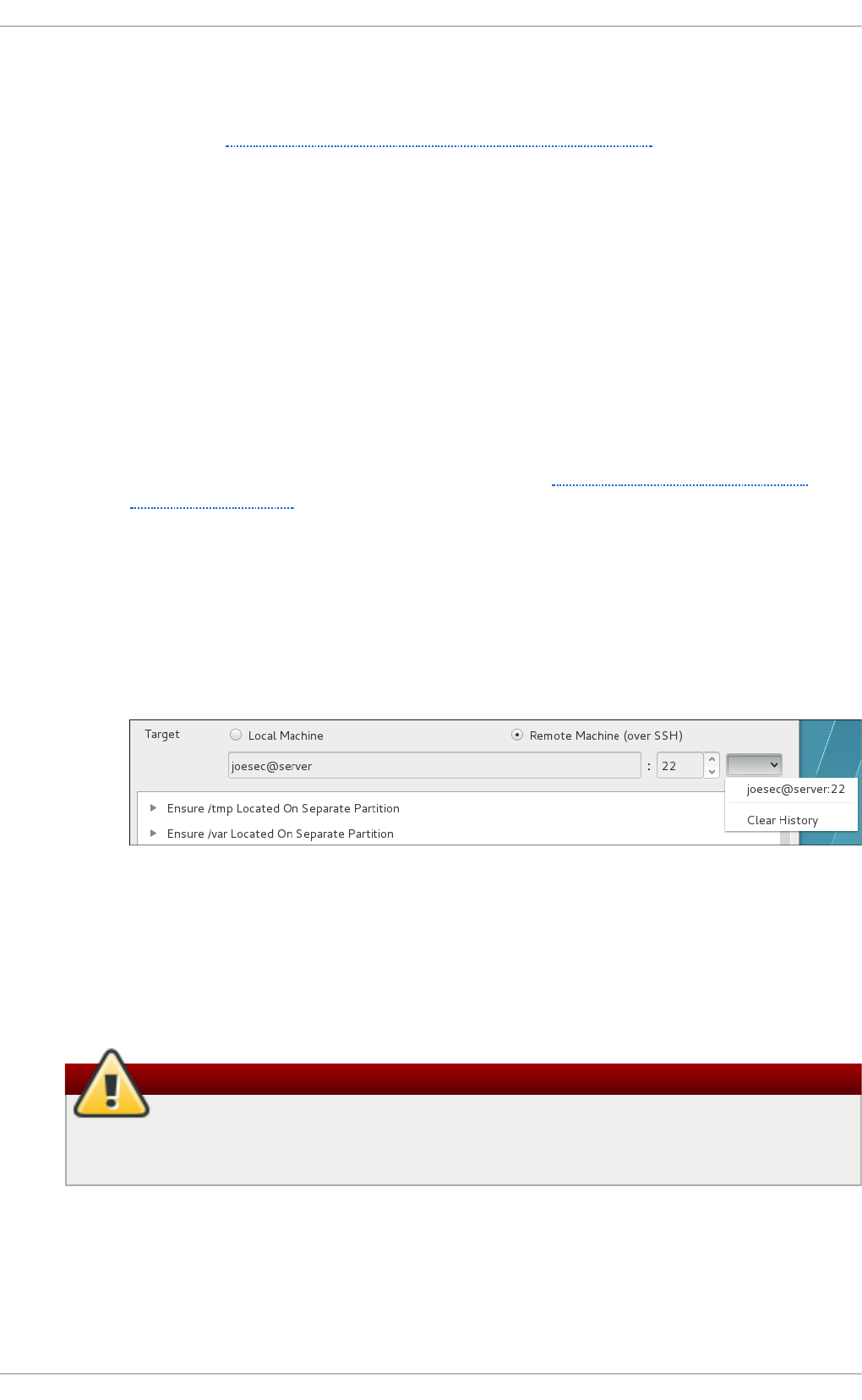

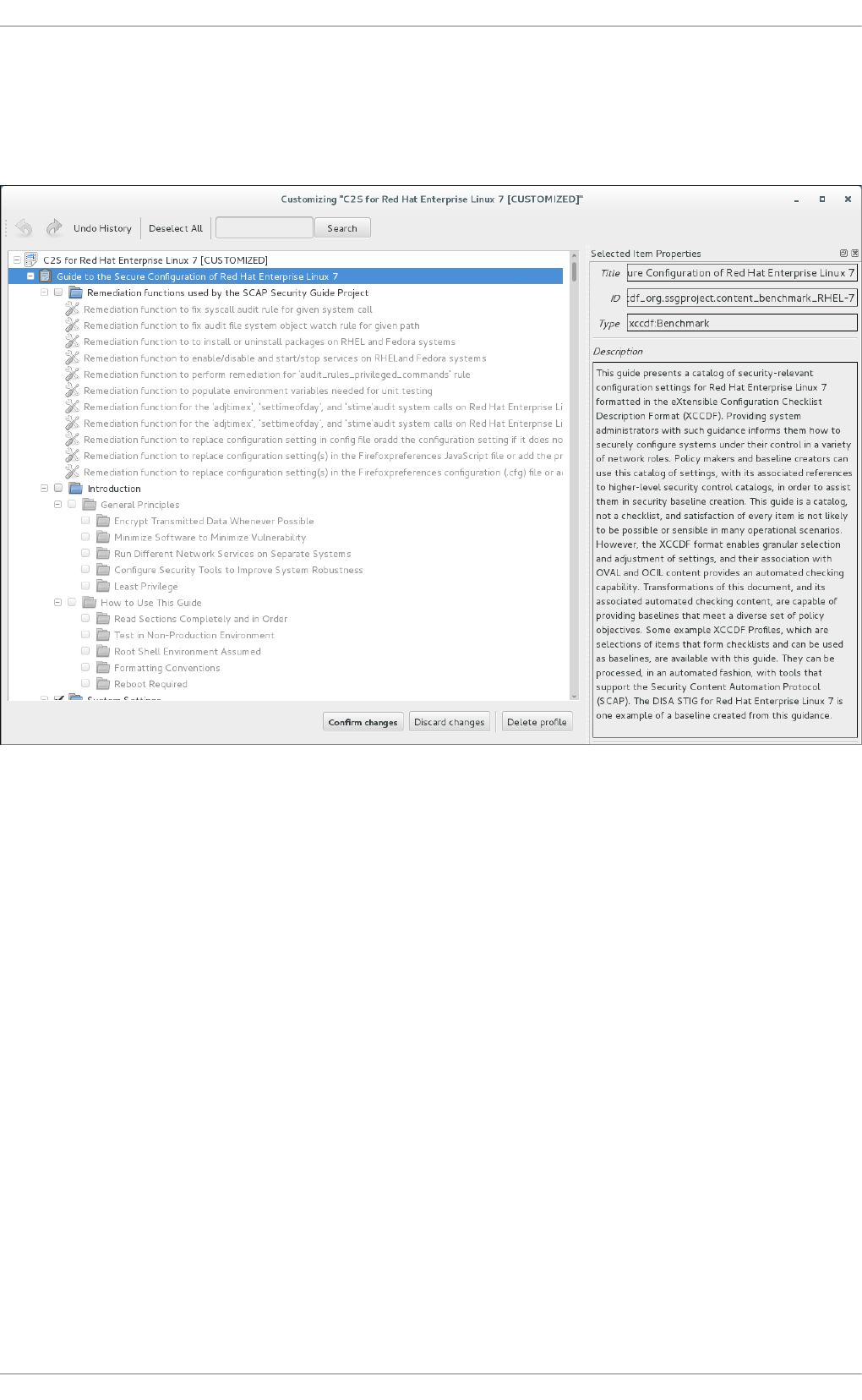

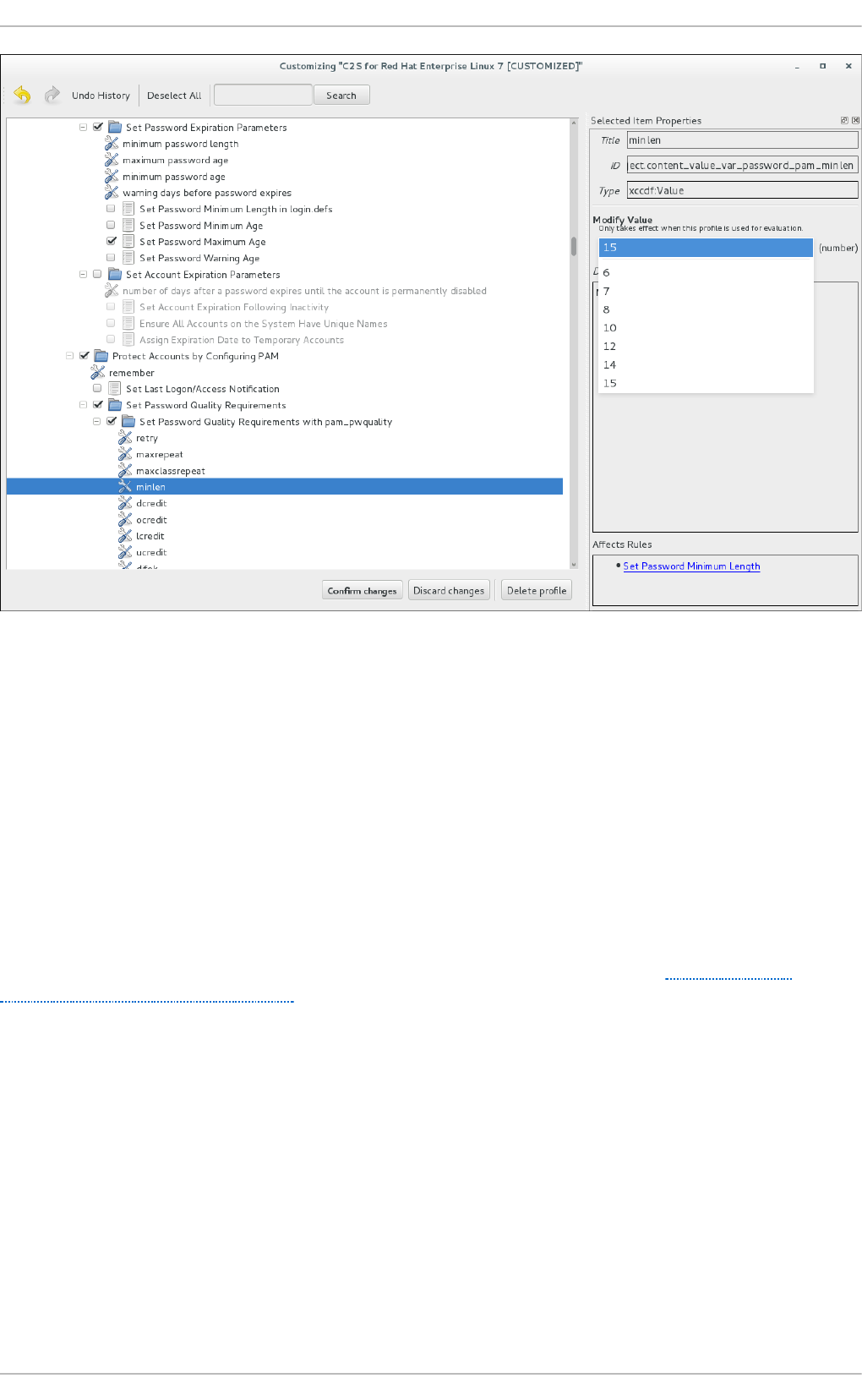

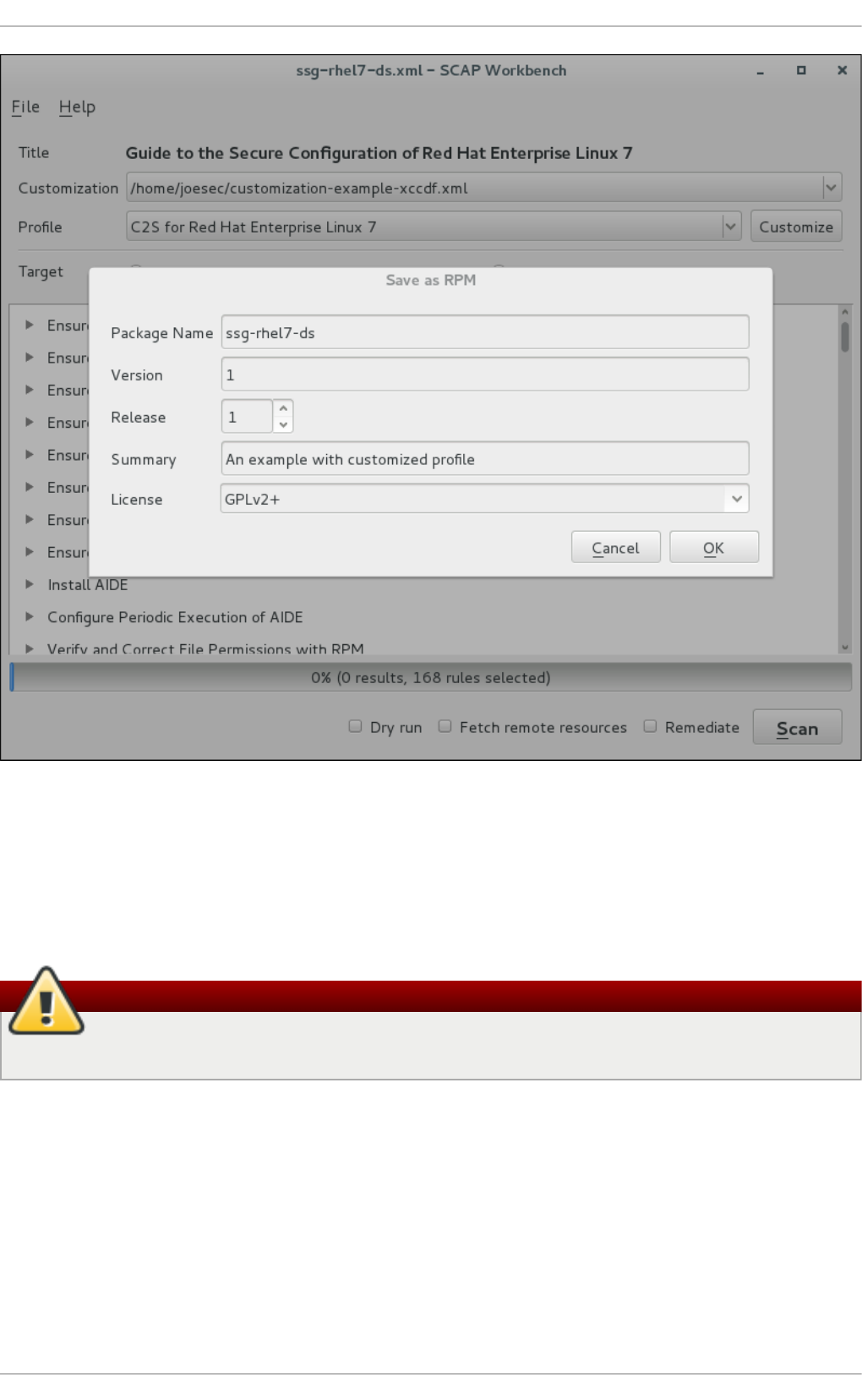

- 6.3. Using SCAP Workbench

- 6.4. Using oscap

- 6.5. Using OpenSCAP with Docker

- 6.6. Using OpenSCAP with Atomic

- 6.7. Using OpenSCAP with Red Hat Satellite

- 6.8. Practical Examples

- 6.9. Additional Resources

- Chapter 7. Federal Standards and Regulations

- Appendix A. Encryption Standards

- Appendix B. Audit System Reference

- Appendix C. Revision History

Mirek Jahoda Robert Krátký Martin Prpič

Tomáš Čapek Stephen Wadeley Yoana Ruseva

Miroslav Svoboda

Red Hat Enterprise Linux 7

Security Guide

A Guide to Securing Red Hat Enterprise Linux 7

Mirek Jahoda

Red Hat Customer Content Services

mjahoda@redhat.com

Robert Krátký

Red Hat Customer Content Services

Martin Prpič

Red Hat Customer Content Services

Tomáš Čapek

Red Hat Customer Content Services

Stephen Wadeley

Red Hat Customer Content Services

Yoana Ruseva

Red Hat Customer Content Services

Miroslav Svoboda

Red Hat Customer Content Services

Legal Notice

Copyright © 2017 Red Hat, Inc.

This document is licensed by Red Hat under the Creative Commons Attribution-ShareAlike 3.0 Unported License. If you distribute this document, or a modified version of it, you must provide attribution to Red Hat, Inc. and provide a link to the original. If the document is modified, all Red Hat trademarks must be removed.

Red Hat, as the licensor of this document, waives the right to enforce, and agrees not to assert, Section 4d of CC-BY-SA to the fullest extent permitted by applicable law.

Red Hat, Red Hat Enterprise Linux, the Shadowman logo, JBoss, OpenShift, Fedora, the Infinity logo, and RHCE are trademarks of Red Hat, Inc., registered in the United States and other countries.

Linux® is the registered trademark of Linus Torvalds in the United States and other countries.

Java® is a registered trademark of Oracle and/or its affiliates.

XFS® is a trademark of Silicon Graphics International Corp. or its subsidiaries in the United States and/or other countries.

MySQL® is a registered trademark of MySQL AB in the United States, the European Union and other countries.

Node.js® is an official trademark of Joyent. Red Hat Software Collections is not formally related to or endorsed by the official Joyent Node.js open source or commercial project.

The OpenStack® Word Mark and OpenStack logo are either registered trademarks/service marks or trademarks/service marks of the OpenStack Foundation, in the United States and other countries and are used with the OpenStack Foundation's permission. We are not affiliated with, endorsed or sponsored by the OpenStack Foundation, or the OpenStack community.

All other trademarks are the property of their respective owners.

Abstract

This book assists users and administrators in learning the processes and practices of securing workstations and servers against local and remote intrusion, exploitation, and malicious activity. Focused on Red Hat Enterprise Linux but detailing concepts and techniques valid for all Linux systems, this guide details the planning and the tools involved in creating a secured computing environment for the data center, workplace, and home. With proper administrative knowledge, vigilance, and tools, systems running Linux can be both fully functional and secured from most common intrusion and exploit methods.

Table of Contents

Chapter 1. Overview of Security Topics

1.1. What is Computer Security?

1.2. Security Controls

1.3. Vulnerability Assessment

1.4. Security Threats

1.5. Common Exploits and Attacks

Chapter 2. Security Tips for Installation

2.1. Securing BIOS

2.2. Partitioning the Disk

2.3. Installing the Minimum Amount of Packages Required

2.4. Restricting Network Connectivity During the Installation Process

2.5. Post-installation Procedures

2.6. Additional Resources

Chapter 3. Keeping Your System Up-to-Date

3.1. Maintaining Installed Software

3.2. Using the Red Hat Customer Portal

3.3. Additional Resources

Chapter 4. Hardening Your System with Tools and Services

4.1. Desktop Security

4.2. Controlling Root Access

4.3. Securing Services

4.4. Securing Network Access

4.5. Using Firewalls

4.6. Securing DNS Traffic with DNSSEC

4.7. Securing Virtual Private Networks (VPNs)

4.8. Using OpenSSL

4.9. Using stunnel

4.10. Encryption

4.11. Hardening TLS Configuration

4.12. Using MACsec (IEEE 802.1AE)

Chapter 5. System Auditing

Use Cases

5.1. Audit System Architecture

5.2. Installing the audit Packages

5.3. Configuring the audit Service

5.4. Starting the audit Service

5.5. Defining Audit Rules

5.6. Understanding Audit Log Files

5.7. Searching the Audit Log Files

5.8. Creating Audit Reports

5.9. Additional Resources

Chapter 6. Compliance and Vulnerability Scanning with OpenSCAP

6.1. Security Compliance in Red Hat Enterprise Linux

6.2. Defining Compliance Policy

6.3. Using SCAP Workbench

6.4. Using oscap

6.5. Using OpenSCAP with Docker

6.6. Using OpenSCAP with Atomic

6.7. Using OpenSCAP with Red Hat Satellite

6.8. Practical Examples

6.9. Additional Resources

Chapter 7. Federal Standards and Regulations

7.1. Federal Information Processing Standard (FIPS)

7.2. National Industrial Security Program Operating Manual (NISPOM)

7.3. Payment Card Industry Data Security Standard (PCI DSS)

7.4. Security Technical Implementation Guide

Appendix A. Encryption Standards

A.1. Synchronous Encryption

A.2. Public-key Encryption

Appendix B. Audit System Reference

B.1. Audit Event Fields

B.2. Audit Record Types

Appendix C. Revision History

Chapter 1. Overview of Security Topics

Due to the increased reliance on powerful, networked computers to help run businesses and keep track of our personal information, entire industries have been formed around the practice of network and computer security. Enterprises have solicited the knowledge and skills of security experts to properly audit systems and tailor solutions to fit the operating requirements of their organization. Because most organizations are increasingly dynamic in nature, with workers accessing critical company IT resources both locally and remotely, the need for secure computing environments has become more pronounced.

Unfortunately, many organizations (as well as individual users) regard security as more of an afterthought, a process that is overlooked in favor of increased power, productivity, convenience, ease of use, and budgetary concerns. Proper security implementation is often enacted postmortem, after an unauthorized intrusion has already occurred. Taking the correct measures prior to connecting a site to an untrusted network, such as the Internet, is an effective means of thwarting many attempts at intrusion.

Note

This document makes several references to files in the /lib directory. When using 64-bit systems, some of the files mentioned may instead be located in /lib64.

1.1. What is Computer Security?

Computer security is a general term that covers a wide area of computing and information processing. Industries that depend on computer systems and networks to conduct daily business transactions and access critical information regard their data as an important part of their overall assets. Several terms and metrics have entered our daily business vocabulary, such as total cost of ownership (TCO), return on investment (ROI), and quality of service (QoS). Using these metrics, industries can calculate aspects such as data integrity and high-availability (HA) as part of their planning and process management costs. In some industries, such as electronic commerce, the availability and trustworthiness of data can mean the difference between success and failure.

1.1.1. Standardizing Security

Enterprises in every industry rely on regulations and rules that are set by standards-making bodies such as the American Medical Association (AMA) or the Institute of Electrical and Electronics Engineers (IEEE). The same ideals hold true for information security. Many security consultants and vendors agree upon the standard security model known as CIA, or Confidentiality, Integrity, and Availability. This three-tiered model is a generally accepted component of assessing the risks to sensitive information and establishing security policy. The following describes the CIA model in further detail:

- Confidentiality: Sensitive information must be available only to a set of pre-defined individuals. Unauthorized transmission and usage of information should be restricted. For example, confidentiality of information ensures that a customer's personal or financial information is not obtained by an unauthorized individual for malicious purposes such as identity theft or credit fraud.

- Integrity: Information should not be altered in ways that render it incomplete or incorrect. Unauthorized users should be restricted from the ability to modify or destroy sensitive information.

- Availability: Information should be accessible to authorized users any time that it is needed. Availability is a warranty that information can be obtained with an agreed-upon frequency and timeliness. This is often measured in terms of percentages and agreed to formally in Service Level Agreements (SLAs) used by network service providers and their enterprise clients.
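An availability percentage of the kind written into an SLA can be turned into a concrete downtime budget with simple arithmetic. The following Python sketch is only an illustration: the function name `allowed_downtime_hours`, the 365-day year, and the sample percentages are assumptions chosen for the example and do not come from any particular SLA.

```python
# Illustrative sketch: convert an SLA availability percentage into the
# downtime it permits. The name and the 365-day year are assumptions.
HOURS_PER_YEAR = 365 * 24  # 8760 hours, ignoring leap years


def allowed_downtime_hours(availability_percent, period_hours=HOURS_PER_YEAR):
    """Return the hours of downtime permitted over the given period."""
    if not 0 <= availability_percent <= 100:
        raise ValueError("availability must be between 0 and 100")
    return period_hours * (1 - availability_percent / 100)


if __name__ == "__main__":
    # Sample targets only; real SLAs define their own figures and periods.
    for target in (99.0, 99.9, 99.99):
        hours = allowed_downtime_hours(target)
        print(f"{target}% availability allows {hours:.2f} hours of downtime per year")
```

Under these assumptions, a 99.9% target leaves roughly 8.76 hours of downtime per year, which shows how quickly each additional "nine" tightens the budget.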

1.2. Security Controls

Computer security is often divided into three distinct master categories, commonly referred to as controls:

- Physical

- Technical

- Administrative

These three broad categories define the main objectives of proper security implementation. Within these controls are sub-categories that further detail the controls and how to implement them.

1.2.1. Physical Controls

Physical control is the implementation of security measures in a defined structure used to deter or prevent unauthorized access to sensitive material. Examples of physical controls are:

- Closed-circuit surveillance cameras

- Motion or thermal alarm systems

- Security guards

- Picture IDs

- Locked and dead-bolted steel doors

- Biometrics (includes fingerprint, voice, face, iris, handwriting, and other automated methods used to recognize individuals)

1.2.2. Technical Controls

Technical controls use technology as a basis for controlling the access and usage of sensitive data throughout a physical structure and over a network. Technical controls are far-reaching in scope and encompass such technologies as:

- Encryption

- Smart cards

- Network authentication

- Access control lists (ACLs)

- File integrity auditing software

1.2.3. Administrative Controls

Administrative controls define the human factors of security. They involve all levels of personnel within an organization and determine which users have access to what resources and information by such means as:

- Training and awareness

- Disaster preparedness and recovery plans

- Personnel recruitment and separation strategies

- Personnel registration and accounting

1.3. Vulnerability Assessment

Given time, resources, and motivation, an attacker can break into nearly any system. None of the security procedures and technologies currently available can guarantee that a system is completely safe from intrusion. Routers help secure gateways to the Internet. Firewalls help secure the edge of the network. Virtual Private Networks safely pass data in an encrypted stream. Intrusion detection systems warn you of malicious activity. However, the success of each of these technologies is dependent upon a number of variables, including:

- The expertise of the staff responsible for configuring, monitoring, and maintaining the technologies.

- The ability to patch and update services and kernels quickly and efficiently.

- The ability of those responsible to keep constant vigilance over the network.

Given the dynamic state of data systems and technologies, securing corporate resources can be quite complex. Due to this complexity, it is often difficult to find expert resources for all of your systems. While it is possible to have personnel knowledgeable in many areas of information security at a high level, it is difficult to retain staff who are experts in more than a few subject areas. This is mainly because each subject area of information security requires constant attention and focus. Information security does not stand still.

A vulnerability assessment is an internal audit of your network and system security, the results of which indicate the confidentiality, integrity, and availability of your network (as explained in Section 1.1.1, “Standardizing Security”). Typically, vulnerability assessment starts with a reconnaissance phase, during which important data regarding the target systems and resources is gathered. This phase leads to the system readiness phase, whereby the target is essentially checked for all known vulnerabilities. The readiness phase culminates in the reporting phase, where the findings are classified into categories of high, medium, and low risk, and methods for improving the security (or mitigating the risk of vulnerability) of the target are discussed.
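The reporting phase described above amounts to grouping findings by risk category. The following Python sketch shows one possible way to do this. The 0 to 10 severity score, the thresholds, the function names `classify` and `build_report`, and the sample findings are all assumptions made for the illustration; this guide does not prescribe a scoring scheme.

```python
from collections import defaultdict


def classify(score):
    """Map a numeric severity score to a risk category.

    The 0-10 scale and the cut-off values are illustrative assumptions.
    """
    if score >= 7.0:
        return "high"
    if score >= 4.0:
        return "medium"
    return "low"


def build_report(findings):
    """Group (host, description, score) findings by risk category."""
    report = defaultdict(list)
    for host, description, score in findings:
        report[classify(score)].append((host, description))
    return dict(report)


if __name__ == "__main__":
    # Hypothetical findings, invented purely for the example.
    findings = [
        ("web01.example.com", "outdated TLS configuration", 5.3),
        ("db01.example.com", "default administrative password", 9.1),
        ("print01.example.com", "verbose service banner", 2.0),
    ]
    for category, items in build_report(findings).items():
        print(category, items)
```

Keeping the thresholds in a single function makes it easy to adjust the categories to whatever scoring scheme the assessment tool in use actually reports.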

If you were to perform a vulnerability assessment of your home, you would likely check each door to see whether it is closed and locked. You would also check every window, making sure that it closes completely and latches correctly. This same concept applies to systems, networks, and electronic data. Malicious users are the thieves and vandals of your data. Focus on their tools, mentality, and motivations, and you can then react swiftly to their actions.

1.3.1. Defining Assessment and Testing

Vulnerability assessments may be broken down into one of two types: outside looking in and inside looking around.

When performing an outside-looking-in vulnerability assessment, you are attempting to compromise your systems from the outside. Being external to your company provides you with the cracker's viewpoint. You see what a cracker sees: publicly-routable IP addresses, systems on your DMZ, external interfaces of your firewall, and more. DMZ stands for "demilitarized zone", which corresponds to a computer or small subnetwork that sits between a trusted internal network, such as a corporate private LAN, and an untrusted external network, such as the public Internet. Typically, the DMZ contains devices accessible to Internet traffic, such as Web (HTTP) servers, FTP servers, SMTP (e-mail) servers and DNS servers.

When you perform an inside-looking-around vulnerability assessment, you are at an advantage since you are internal and your status is elevated to trusted. This is the viewpoint you and your co-workers have once logged on to your systems. You see print servers, file servers, databases, and other resources.

There are striking distinctions between the two types of vulnerability assessments. Being internal to your company gives you more privileges than an outsider. In most organizations, security is configured to keep intruders out. Very little is done to secure the internals of the organization (such as departmental firewalls, user-level access controls, and authentication procedures for internal resources). Typically, there are many more resources when looking around inside as most systems are internal to a company. Once you are outside the company, your status is untrusted. The systems and resources available to you externally are usually very limited.

Consider the difference between vulnerability assessments and penetration tests. Think of a vulnerability assessment as the first step to a penetration test. The information gleaned from the assessment is used for testing. Whereas the assessment is undertaken to check for holes and potential vulnerabilities, penetration testing actually attempts to exploit the findings.

Assessing network infrastructure is a dynamic process. Security, both information and physical, is dynamic. Performing an assessment shows an overview, which can turn up false positives and false negatives. A false positive is a result in which the tool reports a vulnerability that does not actually exist. A false negative is a result in which the tool fails to report a vulnerability that does exist.
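The two error types can be expressed as set differences between what a tool reports and what is actually present on the system. The following Python sketch illustrates the definitions only. The function name `compare_scan` and the finding labels are hypothetical, and in practice the set of actual vulnerabilities is known only after manual verification.

```python
def compare_scan(reported, actual):
    """Compare tool-reported findings with manually verified ones."""
    return {
        # Reported by the tool and confirmed to exist.
        "true_positives": reported & actual,
        # Reported by the tool but not actually present.
        "false_positives": reported - actual,
        # Actually present but missed by the tool.
        "false_negatives": actual - reported,
    }


if __name__ == "__main__":
    # Hypothetical finding labels, invented for the example.
    reported = {"weak-ssh-ciphers", "anonymous-ftp", "open-relay"}
    actual = {"weak-ssh-ciphers", "anonymous-ftp", "world-writable-share"}
    for kind, items in compare_scan(reported, actual).items():
        print(kind, sorted(items))
```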

Security administrators are only as good as the tools they use and the knowledge they retain. Take any of the assessment tools currently available, run them against your system, and it is almost guaranteed that there will be some false positives. Whether by program fault or user error, the result is the same. The tool may find false positives, or, even worse, false negatives.

Now that the difference between a vulnerability assessment and a penetration test is defined, take the findings of the assessment and review them carefully before conducting a penetration test as part of your new best practices approach.

Warning

Do not attempt to exploit vulnerabilities on production systems. Doing so can have adverse effects on productivity and efficiency of your systems and network.

The following list examines some of the benefits of performing vulnerability assessments:

- Creates proactive focus on information security.

- Finds potential exploits before crackers find them.

- Results in systems being kept up to date and patched.

- Promotes growth and aids in developing staff expertise.

- Abates financial loss and negative publicity.

1.3.2. Establishing a Methodology for Vulnerability Assessment

To aid in the selection of tools for a vulnerability assessment, it is helpful to establish a vulnerability assessment methodology. Unfortunately, there is no predefined or industry-approved methodology at this time; however, common sense and best practices can act as a sufficient guide.

What is the target? Are we looking at one server, or are we looking at our entire network and everything within the network? Are we external or internal to the company? The answers to these questions are important as they help determine not only which tools to select but also the manner in which they are used.

To learn more about establishing methodologies, see the following website:

https://www.owasp.org/ (The Open Web Application Security Project)

1.3.3. Vulnerability Assessment Tools

An assessment can start by using some form of an information-gathering tool. When assessing the entire network, map the layout first to find the hosts that are running. Once located, examine each host individually. Focusing on these hosts requires another set of tools. Knowing which tools to use may be the most crucial step in finding vulnerabilities.

Just as in any aspect of everyday life, there are many different tools that perform the same job. This concept applies to performing vulnerability assessments as well. There are tools specific to operating systems, applications, and even networks (based on the protocols used). Some tools are free; others are not. Some tools are intuitive and easy to use, while others are cryptic and poorly documented but have features that other tools do not.

Finding the right tools may be a daunting task and, in the end, experience counts. If possible, set up a test lab and try out as many tools as you can, noting the strengths and weaknesses of each. Review the README file or man page for the tools. Additionally, look to the Internet for more information, such as articles, step-by-step guides, or even mailing lists specific to the tools.

The tools discussed below are just a small sampling of the available tools.

1.3.3.1. Scanning Hosts with Nmap

Nmap is a popular tool that can be used to determine the layout of a network. Nmap has been available for many years and is probably the most often used tool when gathering information. An excellent manual page is included that provides detailed descriptions of its options and usage. Administrators can use Nmap on a network to find host systems and open ports on those systems.

Nmap is a competent first step in vulnerability assessment. You can map out all the hosts within your network and even pass an option that allows Nmap to attempt to identify the operating system running on a particular host. Nmap is a good foundation for establishing a policy of using secure services and restricting unused services.
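To make concrete what finding an open port means, the following Python sketch attempts a plain TCP connection to each port in a short list and records which ones accept it. This is a simplified illustration of the idea, not a description of how Nmap itself works, and it offers none of Nmap's features. The function names `is_port_open` and `scan_ports`, the port list, and the timeout values are assumptions made for the example, which targets only the local machine.

```python
import socket


def is_port_open(host, port, timeout=1.0):
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unreachable hosts.
        return False


def scan_ports(host, ports, timeout=1.0):
    """Return the subset of ports on host that accept a TCP connection."""
    return [port for port in ports if is_port_open(host, port, timeout)]


if __name__ == "__main__":
    # Check a few well-known ports on the local machine only.
    print(scan_ports("127.0.0.1", [22, 53, 80, 113], timeout=0.5))
```

A sequential connect check like this is slow and cannot distinguish a filtered port from a closed one, which is one reason a dedicated scanner is the better choice for real assessments.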

To install Nmap, run the yum install nmap command as the root user.

1.3.3.1.1. Using Nmap

Nmap can be run from a shell prompt by typing the nmap command followed by the host

name or IP address of the machine to scan:

nmap <hostname>

For example, to scan a machine with host name foo.example.com, type the following at a

shell prompt:

~]$ nmap foo.example.com

The results of a basic scan (which could take up to a few minutes, depending on where the

host is located and other network conditions) look similar to the following:

Interesting ports on foo.example.com:

Not shown: 1710 filtered ports

PORT STATE SERVICE

22/tcp open ssh

53/tcp open domain

80/tcp open http

113/tcp closed auth

Nmap tests the most common network communication ports for listening or waiting

services. This knowledge can be helpful to an administrator who wants to close

unnecessary or unused services.
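As a rough illustration of how a scan result can feed a review of running services, the following sketch filters Nmap's normal output for ports reported as open. The function name list_open_ports and the embedded sample are illustrative assumptions; the sample repeats the output shown above, and any other lines in real Nmap output are ignored by the filter.

```shell
# Print the port/protocol and service name of every port reported as "open"
# in Nmap's normal (human-readable) output read from standard input.
list_open_ports() {
    awk '$1 ~ /^[0-9]+\/(tcp|udp)$/ && $2 == "open" { print $1, $3 }'
}

# Sample input copied from the scan result shown in this section.
sample_scan='PORT STATE SERVICE
22/tcp open ssh
53/tcp open domain
80/tcp open http
113/tcp closed auth'

open_ports=$(printf '%s\n' "$sample_scan" | list_open_ports)
printf '%s\n' "$open_ports"
```

On a live system, the same function could be fed directly from a scan, for example: nmap foo.example.com | list_open_ports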

For more information about using Nmap, see the official homepage at the following URL:

http://www.insecure.org/

1.3.3.2. Nessus

Nessus is a full-service security scanner. The plug-in architecture of Nessus allows users

to customize it for their systems and networks. As with any scanner, Nessus is only as

good as the signature database it relies upon. Fortunately, Nessus is frequently updated

and features full reporting, host scanning, and real-time vulnerability searches. Remember

that there could be false positives and false negatives, even in a tool as powerful and as

frequently updated as Nessus.

Note

The Nessus client and server software requires a subscription to use. It has been

included in this document as a reference to users who may be interested in using

this popular application.


For more information about Nessus, see the official website at the following URL:

http://www.nessus.org/

1.3.3.3. OpenVAS

OpenVAS (Open Vulnerability Assessment System) is a set of tools and services that can

be used to scan for vulnerabilities and for comprehensive vulnerability management.

The OpenVAS framework offers a number of web-based, desktop, and command line

tools for controlling the various components of the solution. The core functionality of

OpenVAS is provided by a security scanner, which makes use of over 33 thousand daily-

updated Network Vulnerability Tests (NVT). Unlike Nessus (see Section 1.3.3.2, “Nessus”),

OpenVAS does not require any subscription.

For more information about OpenVAS, see the official website at the following URL:

http://www.openvas.org/

1.3.3.4. Nikto

Nikto is an excellent common gateway interface (CGI) script scanner. Nikto not only

checks for CGI vulnerabilities but does so in an evasive manner, so as to elude intrusion-

detection systems. It comes with thorough documentation which should be carefully

reviewed prior to running the program. If you have web servers serving CGI scripts,

Nikto can be an excellent resource for checking the security of these servers.

More information about Nikto can be found at the following URL:

http://cirt.net/nikto2

1.4. Security Threats

1.4.1. Threats to Network Security

Bad practices when configuring the following aspects of a network can increase the risk of

an attack.

Insecure Architectures

A misconfigured network is a primary entry point for unauthorized users. Leaving a trust-

based, open local network vulnerable to the highly-insecure Internet is much like leaving a

door ajar in a crime-ridden neighborhood — nothing may happen for an arbitrary amount of

time, but someone exploits the opportunity eventually.

Broadcast Networks

System administrators often fail to realize the importance of networking hardware in their

security schemes. Simple hardware, such as hubs and routers, relies on the broadcast or

non-switched principle; that is, whenever a node transmits data across the network to a

recipient node, the hub or router sends a broadcast of the data packets until the recipient

node receives and processes the data. This method is the most vulnerable to address

resolution protocol (ARP) or media access control (MAC) address spoofing by both outside

intruders and unauthorized users on local hosts.

Centralized Servers


Another potential networking pitfall is the use of centralized computing. A common cost-

cutting measure for many businesses is to consolidate all services to a single powerful

machine. This can be convenient as it is easier to manage and costs considerably less

than multiple-server configurations. However, a centralized server introduces a single

point of failure on the network. If the central server is compromised, it may render the

network completely useless or worse, prone to data manipulation or theft. In these

situations, a central server becomes an open door that allows access to the entire

network.

1.4.2. Threats to Server Security

Server security is as important as network security because servers often hold a great

deal of an organization's vital information. If a server is compromised, all of its contents

may become available for the cracker to steal or manipulate at will. The following sections

detail some of the main issues.

Unused Services and Open Ports

A full installation of Red Hat Enterprise Linux 7 contains more than 1000 application and

library packages. However, most server administrators do not opt to install every single

package in the distribution, preferring instead to install a base installation of packages,

including several server applications. See Section 2.3, “Installing the Minimum Amount of

Packages Required” for an explanation of the reasons to limit the number of installed

packages and for additional resources.

A common occurrence among system administrators is to install the operating system

without paying attention to what programs are actually being installed. This can be

problematic because unneeded services may be installed, configured with the default

settings, and possibly turned on. This can cause unwanted services, such as Telnet, DHCP,

or DNS, to run on a server or workstation without the administrator realizing it, which in

turn can cause unwanted traffic to the server or even a potential pathway into the system

for crackers. See Section 4.3, “Securing Services” for information on closing ports and

disabling unused services.
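One way to notice unwanted services is to compare the ports a machine is listening on against the short list of ports that are expected. The following is a minimal sketch of that comparison; the function name unexpected_ports and the example port numbers are assumptions made for illustration, not part of any Red Hat tool.

```shell
# Print every port from standard input (one per line) that is not in the
# allow list passed as arguments.
unexpected_ports() {
    allowed=" $* "
    while read -r port; do
        [ -n "$port" ] || continue          # skip empty lines
        case "$allowed" in
            *" $port "*) ;;                 # port is expected, ignore it
            *) printf '%s\n' "$port" ;;     # port is not on the allow list
        esac
    done
}

# Hypothetical example: ports 22 and 80 are expected, 23 (Telnet) is not.
flagged=$(printf '%s\n' 22 23 80 | unexpected_ports 22 80)
printf 'Unexpected listening ports: %s\n' "$flagged"
```

On a live system, the port list could be taken from the ss utility, for example with ss -tln piped through awk 'NR > 1 { sub(/.*:/, "", $4); print $4 }'. The column position used there is an assumption about the ss output layout and should be checked against the installed version.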

Unpatched Services

Most server applications that are included in a default installation are solid, thoroughly

tested pieces of software. Having been in use in production environments for many years,

their code has been thoroughly refined and many of the bugs have been found and fixed.

However, there is no such thing as perfect software and there is always room for further

refinement. Moreover, newer software is often not as rigorously tested as one might

expect, because of its recent arrival to production environments or because it may not be

as popular as other server software.

Developers and system administrators often find exploitable bugs in server applications

and publish the information on bug tracking and security-related websites such as the

Bugtraq mailing list (http://www.securityfocus.com) or the Computer Emergency Response

Team (CERT) website (http://www.cert.org). Although these mechanisms are an effective

way of alerting the community to security vulnerabilities, it is up to system administrators

to patch their systems promptly. This is particularly true because crackers have access to

these same vulnerability tracking services and will use the information to crack unpatched

systems whenever they can. Good system administration requires vigilance, constant bug

tracking, and proper system maintenance to ensure a more secure computing

environment.


See Chapter 3, Keeping Your System Up-to-Date for more information about keeping a

system up-to-date.

Inattentive Administration

Administrators who fail to patch their systems are one of the greatest threats to server

security. According to the SysAdmin, Audit, Network, Security Institute (SANS), the primary

cause of computer security vulnerability is "assigning untrained people to maintain

security and providing neither the training nor the time to make it possible to learn and do

the job." This applies as much to inexperienced administrators as it does to

overconfident or unmotivated administrators.

Some administrators fail to patch their servers and workstations, while others fail to watch

log messages from the system kernel or network traffic. Another common error is when

default passwords or keys to services are left unchanged. For example, some databases

have default administration passwords because the database developers assume that

the system administrator changes these passwords immediately after installation. If a

database administrator fails to change this password, even an inexperienced cracker can

use a widely-known default password to gain administrative privileges to the database.

These are only a few examples of how inattentive administration can lead to

compromised servers.

Inherently Insecure Services

Even the most vigilant organization can fall victim to vulnerabilities if the network services

they choose are inherently insecure. For instance, there are many services developed

under the assumption that they are used over trusted networks; however, this

assumption fails as soon as the service becomes available over the Internet — which is

itself inherently untrusted.

One category of insecure network services comprises those that require unencrypted

usernames and passwords for authentication. Telnet and FTP are two such services. If

packet sniffing software is monitoring traffic between the remote user and such a service,

usernames and passwords can be easily intercepted.

Inherently, such services can also more easily fall prey to what the security industry

terms the man-in-the-middle attack. In this type of attack, a cracker redirects network

traffic by tricking a cracked name server on the network into pointing to the attacker's machine instead of

the intended server. Once someone opens a remote session to the server, the attacker's

machine acts as an invisible conduit, sitting quietly between the remote service and the

unsuspecting user, capturing information. In this way, a cracker can gather administrative

passwords and raw data without the server or the user realizing it.

Another category of insecure services includes network file systems and information

services such as NFS or NIS, which are developed explicitly for LAN usage but are,

unfortunately, extended to include WANs (for remote users). NFS does not, by default,

have any authentication or security mechanisms configured to prevent a cracker from

mounting the NFS share and accessing anything contained therein. NIS, as well, has vital

information that must be known by every computer on a network, including passwords and

file permissions, within a plain text ASCII or DBM (ASCII-derived) database. A cracker who

gains access to this database can then access every user account on a network, including

the administrator's account.

By default, Red Hat Enterprise Linux 7 is released with all such services turned off.

However, since administrators often find themselves forced to use these services,

careful configuration is critical. See Section 4.3, “Securing Services” for more information

about setting up services in a safe manner.

[1]


1.4.3. Threats to Workstation and Home PC Security

Workstations and home PCs may not be as prone to attack as networks or servers, but

since they often contain sensitive data, such as credit card information, they are targeted

by system crackers. Workstations can also be co-opted without the user's knowledge and

used by attackers as "slave" machines in coordinated attacks. For these reasons, knowing

the vulnerabilities of a workstation can save users the headache of reinstalling the

operating system, or worse, recovering from data theft.

Bad Passwords

Bad passwords are one of the easiest ways for an attacker to gain access to a system.

For more on how to avoid common pitfalls when creating a password, see Section 4.1.1,

“Password Security”.

Vulnerable Client Applications

Although an administrator may have a fully secure and patched server, that does not

mean remote users are secure when accessing it. For instance, if the server offers

Telnet or FTP services over a public network, an attacker can capture the plain text

usernames and passwords as they pass over the network, and then use the account

information to access the remote user's workstation.

Even when using secure protocols, such as SSH, a remote user may be vulnerable to

certain attacks if they do not keep their client applications updated. For instance, v.1 SSH

clients are vulnerable to an X-forwarding attack from malicious SSH servers. Once

connected to the server, the attacker can quietly capture any keystrokes and mouse

clicks made by the client over the network. This problem was fixed in the v.2 SSH protocol,

but it is up to the user to keep track of what applications have such vulnerabilities and

update them as necessary.

Section 4.1, “Desktop Security” discusses in more detail what steps administrators and

home users should take to limit the vulnerability of computer workstations.

1.5. Common Exploits and Attacks

Table 1.1, “Common Exploits” details some of the most common exploits and entry points

used by intruders to access organizational network resources. Key to these common

exploits are the explanations of how they are performed and how administrators can

properly safeguard their network against such attacks.

Table 1.1. Common Exploits

Exploit | Description | Notes

Exploit: Null or Default Passwords

Description: Leaving administrative passwords blank or using a default password set by the product vendor. This is most common in hardware such as routers and firewalls, but some services that run on Linux can contain default administrator passwords as well (though Red Hat Enterprise Linux 7 does not ship with them).

Notes: Commonly associated with networking hardware such as routers, firewalls, VPNs, and network attached storage (NAS) appliances.

Common in many legacy operating systems, especially those that bundle services (such as UNIX and Windows).

Administrators sometimes create privileged user accounts in a rush and leave the password null, creating a perfect entry point for malicious users who discover the account.

Exploit: Default Shared Keys

Description: Secure services sometimes package default security keys for development or evaluation testing purposes. If these keys are left unchanged and are placed in a production environment on the Internet, all users with the same default keys have access to that shared-key resource, and any sensitive information that it contains.

Notes: Most common in wireless access points and preconfigured secure server appliances.

Exploit: IP Spoofing

Description: A remote machine acts as a node on your local network, finds vulnerabilities with your servers, and installs a backdoor program or Trojan horse to gain control over your network resources.

Notes: Spoofing is quite difficult as it involves the attacker predicting TCP/IP sequence numbers to coordinate a connection to target systems, but several tools are available to assist crackers in performing such an attack.

Depends on target system running services (such as rsh, telnet, FTP and others) that use source-based authentication techniques, which are not recommended when compared to PKI or other forms of encrypted authentication used in ssh or SSL/TLS.


Exploit: Eavesdropping

Description: Collecting data that passes between two active nodes on a network by eavesdropping on the connection between the two nodes.

Notes: This type of attack works mostly with plain text transmission protocols such as Telnet, FTP, and HTTP transfers.

Remote attacker must have access to a compromised system on a LAN in order to perform such an attack; usually the cracker has used an active attack (such as IP spoofing or man-in-the-middle) to compromise a system on the LAN.

Preventative measures include services with cryptographic key exchange, one-time passwords, or encrypted authentication to prevent password snooping; strong encryption during transmission is also advised.


Exploit: Service Vulnerabilities

Description: An attacker finds a flaw or loophole in a service run over the Internet; through this vulnerability, the attacker compromises the entire system and any data that it may hold, and could possibly compromise other systems on the network.

Notes: HTTP-based services such as CGI are vulnerable to remote command execution and even interactive shell access. Even if the HTTP service runs as a non-privileged user such as "nobody", information such as configuration files and network maps can be read, or the attacker can start a denial of service attack which drains system resources or renders it unavailable to other users.

Services sometimes can have vulnerabilities that go unnoticed during development and testing; these vulnerabilities (such as buffer overflows, where attackers crash a service using arbitrary values that fill the memory buffer of an application, giving the attacker an interactive command prompt from which they may execute arbitrary commands) can give complete administrative control to an attacker.

Administrators should make sure that services do not run as the root user, and should stay vigilant of patches and errata updates for applications from vendors or security organizations such as CERT and CVE.


Exploit: Application Vulnerabilities

Description: Attackers find faults in desktop and workstation applications (such as email clients) and execute arbitrary code, implant Trojan horses for future compromise, or crash systems. Further exploitation can occur if the compromised workstation has administrative privileges on the rest of the network.

Notes: Workstations and desktops are more prone to exploitation as workers do not have the expertise or experience to prevent or detect a compromise; it is imperative to inform individuals of the risks they are taking when they install unauthorized software or open unsolicited email attachments.

Safeguards can be implemented such that email client software does not automatically open or execute attachments. Additionally, the automatic update of workstation software using Red Hat Network or other system management services can alleviate the burdens of multi-seat security deployments.

Exploit: Denial of Service (DoS) Attacks

Description: An attacker or group of attackers coordinates against an organization's network or server resources by sending unauthorized packets to the target host (either server, router, or workstation). This forces the resource to become unavailable to legitimate users.

Notes: The most reported DoS case in the US occurred in 2000. Several highly-trafficked commercial and government sites were rendered unavailable by a coordinated ping flood attack using several compromised systems with high bandwidth connections acting as zombies, or redirected broadcast nodes.

Source packets are usually forged (as well as rebroadcast), making investigation as to the true source of the attack difficult.

Advances in ingress filtering (IETF RFC 2267) using iptables and Network Intrusion Detection Systems such as snort assist administrators in tracking down and preventing distributed DoS attacks.


[1] http://www.sans.org/security-resources/mistakes.php


Chapter 2. Security Tips for Installation

Security begins with the first time you put that CD or DVD into your disk drive to install

Red Hat Enterprise Linux 7. Configuring your system securely from the beginning makes it

easier to implement additional security settings later.

2.1. Securing BIOS

Password protection for the BIOS (or BIOS equivalent) and the boot loader can prevent

unauthorized users who have physical access to systems from booting using removable

media or obtaining root privileges through single user mode. The security measures you

should take to protect against such attacks depend both on the sensitivity of the

information on the workstation and the location of the machine.

For example, if a machine is used in a trade show and contains no sensitive information,

then it may not be critical to prevent such attacks. However, if an employee's laptop with

private, unencrypted SSH keys for the corporate network is left unattended at that same

trade show, it could lead to a major security breach with ramifications for the entire

company.

If the workstation is located in a place where only authorized or trusted people have

access, however, then securing the BIOS or the boot loader may not be necessary.

2.1.1. BIOS Passwords

The two primary reasons for password protecting the BIOS of a computer are:

1. Preventing Changes to BIOS Settings — If an intruder has access to the BIOS, they

can set it to boot from a CD-ROM or a flash drive. This makes it possible for them to

enter rescue mode or single user mode, which in turn allows them to start arbitrary

processes on the system or copy sensitive data.

2. Preventing System Booting — Some BIOSes allow password protection of the boot

process. When this protection is activated, an attacker must enter a password before the BIOS

launches the boot loader.

Because the methods for setting a BIOS password vary between computer

manufacturers, consult the computer's manual for specific instructions.

If you forget the BIOS password, it can be reset either with jumpers on the motherboard

or by disconnecting the CMOS battery. For this reason, it is good practice to lock the

computer case if possible. However, consult the manual for the computer or motherboard

before attempting to disconnect the CMOS battery.

2.1.1.1. Securing Non-BIOS-based Systems

Other systems and architectures use different programs to perform low-level tasks

roughly equivalent to those of the BIOS on x86 systems. One example is the Unified

Extensible Firmware Interface (UEFI) shell.

For instructions on password protecting BIOS-like programs, see the manufacturer's

instructions.

2.2. Partitioning the Disk

[2]


Red Hat recommends creating separate partitions for the /boot, /, /home, /tmp, and

/var/tmp/ directories. The reasons for each are different, and we will address each

partition.

/boot

This partition is the first partition that is read by the system during boot up. The

boot loader and kernel images that are used to boot your system into Red Hat

Enterprise Linux 7 are stored in this partition. This partition should not be

encrypted. If this partition is included in / and that partition is encrypted or

otherwise becomes unavailable then your system will not be able to boot.

/home

When user data (/home) is stored in / instead of in a separate partition, the partition can fill up, causing the operating system to become unstable. Also, upgrading your system to the next version of Red Hat Enterprise Linux 7 is much easier when your data is kept in the /home partition, because it is not overwritten during installation. If the root partition (/) becomes corrupt, your data could be lost permanently. A separate partition offers slightly more protection against data loss, and you can also target this partition for frequent backups.

/tmp and /var/tmp/

Both the /tmp and /var/tmp/ directories are used to store data that does not

need to be stored for a long period of time. However, if a lot of data floods one of

these directories, it can consume all of your storage space. If this happens and

these directories are stored within /, your system could become unstable

and crash. For this reason, moving these directories into their own partitions is a

good idea.
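Whether a directory really sits on its own partition can be checked against the kernel's mount table. The following sketch assumes input in the /proc/mounts layout (device, mount point, file system type, and so on); the function name is_separate_mount and the sample mount table are hypothetical and serve only to illustrate the check.

```shell
# Succeed if directory $1 appears as a mount point in /proc/mounts-style
# text read from standard input (fields: device, mount point, type, ...).
is_separate_mount() {
    awk -v dir="$1" '$2 == dir { found = 1 } END { exit !found }'
}

# Hypothetical mount table in which /tmp has its own partition
# but /var/tmp does not.
sample_mounts='/dev/sda2 / xfs rw 0 0
/dev/sda1 /boot xfs rw 0 0
/dev/sda3 /home xfs rw 0 0
/dev/sda5 /tmp xfs rw 0 0'

report=""
for dir in /boot /home /tmp /var/tmp; do
    if printf '%s\n' "$sample_mounts" | is_separate_mount "$dir"; then
        report="$report$dir:separate "
    else
        report="$report$dir:shared "
    fi
done
printf '%s\n' "$report"
```

On an installed system, the function could read the live table instead, for example: is_separate_mount /var/tmp < /proc/mounts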

Note

During the installation process, an option to encrypt partitions is presented to you.

The user must supply a passphrase. This passphrase will be used as a key to

unlock the bulk encryption key, which is used to secure the partition's data. For more

information on LUKS, see Section 4.10.1, “Using LUKS Disk Encryption”.

2.3. Installing the Minimum Amount of Packages Required

It is best practice to install only the packages you will use because each piece of software

on your computer could possibly contain a vulnerability. If you are installing from the DVD

media, take the opportunity to select exactly what packages you want to install during the

installation. If you find you need another package, you can always add it to the system

later.

For more information about installing the Minimal install environment, see the

Software Selection chapter of the Red Hat Enterprise Linux 7 Installation Guide. A minimal

installation can also be performed by a Kickstart file using the --nobase option. For more

information about Kickstart installations, see the Package Selection section from the

Red Hat Enterprise Linux 7 Installation Guide.


2.4. Restricting Network Connectivity During the

Installation Process

When installing Red Hat Enterprise Linux, the installation medium represents a snapshot

of the system at a particular time. Because of this, it may not be up-to-date with the latest

security fixes and may be vulnerable to certain issues that were fixed only after the

system provided by the installation medium was released.

When installing a potentially vulnerable operating system, always limit exposure only to

the closest necessary network zone. The safest choice is the “no network” zone, which

means to leave your machine disconnected during the installation process. In some

cases, a LAN or intranet connection is sufficient while the Internet connection is the

riskiest. To follow the best security practices, choose the closest zone with your

repository while installing Red Hat Enterprise Linux from a network.

For more information about configuring network connectivity, see the Network & Hostname

chapter of the Red Hat Enterprise Linux 7 Installation Guide.

2.5. Post-installation Procedures

The following steps are the security-related procedures that should be performed

immediately after installation of Red Hat Enterprise Linux.

1. Update your system. Enter the following command as root:

~]# yum update

2. Even though the firewall service, firewalld, is automatically enabled with the

installation of Red Hat Enterprise Linux, there are scenarios where it might be

explicitly disabled, for example in the kickstart configuration. In such a case, it is

recommended to consider re-enabling the firewall.

To start firewalld and enable it at boot, enter the following commands as root:

~]# systemctl start firewalld

~]# systemctl enable firewalld

3. To enhance security, disable services you do not need. For example, if there are

no printers installed on your computer, disable the cups service using the following

command:

~]# systemctl disable cups

To review active services, enter the following command:

~]$ systemctl list-units | grep service
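When reviewing services after installation, it can also help to look at which services are enabled to start at boot, not only at those currently active. The following sketch filters text in the layout printed by systemctl list-unit-files --type=service; the function name enabled_services and the sample listing are assumptions made for illustration.

```shell
# Print the names of service units marked "enabled" in the output of
# "systemctl list-unit-files --type=service" read from standard input.
enabled_services() {
    awk '$1 ~ /\.service$/ && $2 == "enabled" { print $1 }'
}

# Hypothetical listing used in place of a live system.
sample_units='UNIT FILE STATE
cups.service enabled
firewalld.service enabled
rsyncd.service disabled
sshd.service enabled

4 unit files listed.'

enabled=$(printf '%s\n' "$sample_units" | enabled_services)
printf '%s\n' "$enabled"
```

On a running system, the function could be used as: systemctl list-unit-files --type=service | enabled_services. Each name in the result is a candidate for the systemctl disable command shown above if the service is not needed.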

2.6. Additional Resources

For more information about installation in general, see the Red Hat Enterprise Linux 7

Installation Guide.


Chapter 3. Keeping Your System Up-to-Date

This chapter describes the process of keeping your system up-to-date, which involves

planning and configuring the way security updates are installed, applying changes

introduced by newly updated packages, and using the Red Hat Customer Portal for keeping

track of security advisories.

3.1. Maintaining Installed Software

As security vulnerabilities are discovered, the affected software must be updated in order

to limit any potential security risks. If the software is a part of a package within a Red Hat

Enterprise Linux distribution that is currently supported, Red Hat is committed to releasing

updated packages that fix the vulnerabilities as soon as possible.

Often, announcements about a given security exploit are accompanied by a patch (or

source code) that fixes the problem. This patch is then applied to the Red Hat

Enterprise Linux package and tested and released as an erratum update. However, if an

announcement does not include a patch, Red Hat developers first work with the maintainer

of the software to fix the problem. Once the problem is fixed, the package is tested and

released as an erratum update.

If an erratum update is released for software used on your system, it is highly

recommended that you update the affected packages as soon as possible to minimize the

amount of time the system is potentially vulnerable.

3.1.1. Planning and Configuring Security Updates

All software contains bugs. Often, these bugs can result in a vulnerability that can expose

your system to malicious users. Packages that have not been updated are a common

cause of computer intrusions. Implement a plan for installing security patches in a timely

manner to quickly eliminate discovered vulnerabilities, so they cannot be exploited.

Test security updates when they become available and schedule them for installation.

Additional controls need to be used to protect the system during the time between the

release of the update and its installation on the system. These controls depend on the

exact vulnerability, but may include additional firewall rules, the use of external firewalls,

or changes in software settings.

Bugs in supported packages are fixed using the errata mechanism. An erratum consists of

one or more RPM packages accompanied by a brief explanation of the problem that the

particular erratum deals with. All errata are distributed to customers with active

subscriptions through the Red Hat Subscription Management service. Errata that

address security issues are called Red Hat Security Advisories.

For more information on working with security errata, see Section 3.2.1, “Viewing Security

Advisories on the Customer Portal”. For detailed information about the Red Hat

Subscription Management service, including instructions on how to migrate from RHN

Classic, see the documentation related to this service: Red Hat Subscription Management.

3.1.1.1. Using the Security Features of Yum

The Yum package manager includes several security-related features that can be used to

search, list, display, and install security errata. These features also make it possible to

use Yum to install nothing but security updates.


To check for security-related updates available for your system, enter the following

command as root:

~]# yum check-update --security

Loaded plugins: langpacks, product-id, subscription-manager

rhel-7-workstation-rpms/x86_64 | 3.4 kB 00:00:00

No packages needed for security; 0 packages available

Note that the above command runs in a non-interactive mode, so it can be used in scripts

for automated checking whether there are any updates available. The command returns

an exit value of 100 when there are any security updates available and 0 when there are

not. On encountering an error, it returns 1.
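Because the exit value carries the result, a script can branch on it without parsing any output. The following is a minimal sketch of such a check; the function name describe_security_status and the message wording are illustrative assumptions, while the exit values 0, 100, and 1 are the ones described above.

```shell
# Translate the exit status of "yum check-update --security" into a message.
# The values 0, 100, and 1 are the ones described in the text above.
describe_security_status() {
    case "$1" in
        0)   echo "up to date: no security updates available" ;;
        100) echo "action needed: security updates available" ;;
        1)   echo "error: yum could not complete the check" ;;
        *)   echo "unknown exit status: $1" ;;
    esac
}

# Intended use on a real system (left commented out so that the sketch
# does not run yum by itself):
#   status=0
#   yum check-update --security >/dev/null 2>&1 || status=$?
#   describe_security_status "$status"

describe_security_status 100
```

Capturing the status with the || status=$? form keeps the script from aborting when it runs under set -e, since an exit value of 100 is a normal result here and not a failure.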

Analogously, use the following command to only install security-related updates:

~]# yum update --security

Use the updateinfo subcommand to display or act upon information provided by

repositories about available updates. The updateinfo subcommand itself accepts a

number of commands, some of which pertain to security-related uses. See Table 3.1,

“Security-related commands usable with yum updateinfo” for an overview of these

commands.

Table 3.1. Security-related commands usable with yum updateinfo

Command | Description

advisory [advisories] | Displays information about one or more advisories. Replace advisories with an advisory number or numbers.

cves | Displays the subset of information that pertains to CVE (Common Vulnerabilities and Exposures).

security or sec | Displays all security-related information.

severity [severity_level] or sev [severity_level] | Displays information about security-relevant packages of the supplied severity_level.

3.1.2. Updating and Installing Packages

When updating software on a system, it is important to download the update from a

trusted source. An attacker can easily rebuild a package with the same version number as

the one that is supposed to fix the problem but with a different security exploit and

release it on the Internet. If this happens, using security measures, such as verifying files

against the original RPM, does not detect the exploit. Thus, it is very important to only

download RPMs from trusted sources, such as from Red Hat, and to check the package

signatures to verify their integrity.

See the Yum chapter of the Red Hat Enterprise Linux 7 System Administrator's Guide for

detailed information on how to use the Yum package manager.

3.1.2.1. Verifying Signed Packages

All Red Hat Enterprise Linux packages are signed with the Red Hat GPG key. GPG stands

for GNU Privacy Guard, or GnuPG, a free software package used for ensuring the

authenticity of distributed files. If the verification of a package signature fails, the package

may have been altered and therefore cannot be trusted.

The Yum package manager allows for an automatic verification of all packages it installs or

upgrades. This feature is enabled by default. To configure this option on your system,

make sure the gpgcheck configuration directive is set to 1 in the /etc/yum.conf

configuration file.

Use the following command to manually verify package files on your filesystem:

rpmkeys --checksig package_file.rpm

See the Product Signing (GPG) Keys article on the Red Hat Customer Portal for additional

information about Red Hat package-signing practices.
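
The two checks described above can be combined in a short script. The following is a minimal sketch under stated assumptions: the function names gpgcheck_enabled and verify_packages are hypothetical, the configuration check inspects only the file it is given (individual repository files can set gpgcheck separately), and rpmkeys --checksig is assumed to exit with a non-zero status when verification fails.

```shell
#!/bin/bash

# Succeed when the given yum configuration file sets gpgcheck to 1.
# $1: path to the configuration file (defaults to /etc/yum.conf)
gpgcheck_enabled() {
    local conf="${1:-/etc/yum.conf}"
    grep -Eq '^[[:space:]]*gpgcheck[[:space:]]*=[[:space:]]*1[[:space:]]*$' "$conf"
}

# Verify the signature of every package file passed as an argument.
# Returns 1 if at least one package fails verification, 0 otherwise.
verify_packages() {
    local failed=0 pkg
    for pkg in "$@"; do
        if ! rpmkeys --checksig "$pkg"; then
            echo "signature check failed: $pkg" >&2
            failed=1
        fi
    done
    return "$failed"
}
```

For example, running gpgcheck_enabled without arguments checks /etc/yum.conf, and verify_packages *.rpm checks every package file in the current directory before any of them is installed.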

3.1.2.2. Installing Signed Packages

To install verified packages (see Section 3.1.2.1, “Verifying Signed Packages” for

information on how to verify packages) from your filesystem, use the yum install

command as the root user as follows:

yum install package_file.rpm

Use a shell glob to install several packages at once. For example, the following command

installs all .rpm packages in the current directory:

yum install *.rpm

Important

Before installing any security errata, be sure to read any special instructions

contained in the erratum report and execute them accordingly. See Section 3.1.3,

“Applying Changes Introduced by Installed Updates” for general instructions about

applying changes made by errata updates.

3.1.3. Applying Changes Introduced by Installed Updates

After downloading and installing security errata and updates, it is important to halt the

usage of the old software and begin using the new software. How this is done depends on

the type of software that has been updated. The following list itemizes the general

categories of software and provides instructions for using updated versions after a

package upgrade.

Note

In general, rebooting the system is the surest way to ensure that the latest version

of a software package is used; however, this option is not always required, nor is it

always available to the system administrator.

Applications

User-space applications are any programs that can be initiated by the user.

Typically, such applications are used only when the user, a script, or an

automated task utility launches them.

Once such a user-space application is updated, halt any instances of the

application on the system, and launch the program again to use the updated

version.

Kernel

The kernel is the core software component for the Red Hat Enterprise Linux 7

operating system. It manages access to memory, the processor, and peripherals,

and it schedules all tasks.

Because of its central role, the kernel cannot be restarted without also rebooting

the computer. Therefore, an updated version of the kernel cannot be used until

the system is rebooted.
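
One way to tell whether a reboot is still pending is to compare the running kernel release with an installed kernel package. The following is a minimal sketch, not a definitive check: the function names kernel_release_from_package and reboot_pending are hypothetical, and the sketch assumes that the kernel package name is the string kernel- followed by the release string printed by uname -r.

```shell
#!/bin/bash

# Strip the leading "kernel-" from a kernel package name so that it can be
# compared with the output of `uname -r`. (Hypothetical helper.)
kernel_release_from_package() {
    printf '%s\n' "${1#kernel-}"
}

# Succeed when the running kernel differs from the given kernel package.
# $1: running release, as printed by `uname -r`
# $2: kernel package name, as printed by `rpm -q kernel`
reboot_pending() {
    [ "$1" != "$(kernel_release_from_package "$2")" ]
}
```

For example, reboot_pending "$(uname -r)" "$(rpm -q --last kernel | head -n 1 | awk '{ print $1 }')" succeeds when the most recently installed kernel package is not the one currently running.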

KVM

When the qemu-kvm and libvirt packages are updated, it is necessary to stop all

guest virtual machines, reload relevant virtualization modules (or reboot the host

system), and restart the virtual machines.

Use the lsmod command to determine which modules from the following are

loaded: kvm, kvm-intel, or kvm-amd. Then use the modprobe -r command to

remove and subsequently the modprobe -a command to reload the affected

modules. For example:

~]# lsmod | grep kvm

kvm_intel 143031 0

kvm 460181 1 kvm_intel

~]# modprobe -r kvm-intel

~]# modprobe -r kvm

~]# modprobe -a kvm kvm-intel

Shared Libraries

Shared libraries are units of code, such as glibc, that are used by a number of

applications and services. Applications utilizing a shared library typically load the

shared code when the application is initialized, so any applications using an

updated library must be halted and relaunched.

To determine which running applications link against a particular library, use the

lsof command:

lsof library

For example, to determine which running applications link against the

libwrap.so.0 library, type:

~]# lsof /lib64/libwrap.so.0

COMMAND PID USER FD TYPE DEVICE SIZE/OFF NODE NAME

pulseaudi 12363 test mem REG 253,0 42520 34121785 /usr/lib64/libwrap.so.0.7.6

gnome-set 12365 test mem REG 253,0 42520 34121785 /usr/lib64/libwrap.so.0.7.6

gnome-she 12454 test mem REG 253,0 42520 34121785 /usr/lib64/libwrap.so.0.7.6

This command returns a list of all the running programs that use TCP wrappers for

host-access control. Therefore, any program listed must be halted and

relaunched when the tcp_wrappers package is updated.
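
When many processes use a library, it can help to reduce the lsof listing to the processes that need to be relaunched. The following is a minimal sketch: the function name list_library_users is hypothetical, and it assumes the column layout shown in the lsof output above (COMMAND in the first column, PID in the second).

```shell
#!/bin/bash

# Read `lsof` output on standard input, skip the header line, and print
# each unique "PID COMMAND" pair once. (Hypothetical helper.)
list_library_users() {
    awk 'NR > 1 { print $2, $1 }' | sort -u
}
```

For example, lsof /lib64/libwrap.so.0 | list_library_users prints one line per process that has the library mapped.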

systemd Services

systemd services are persistent server programs usually launched during the

boot process. Examples of systemd services include sshd or vsftpd.

Because these programs usually persist in memory as long as a machine is

running, each updated systemd service must be halted and relaunched after its

package is upgraded. This can be done as the root user using the systemctl

command:

systemctl restart service_name

Replace service_name with the name of the service you want to restart, such as

sshd.
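
When an update affects several services, the restart can be scripted. The following is a minimal sketch, not a definitive implementation: the function name restart_services is hypothetical, and the service names are whatever the caller passes in. It uses systemctl restart as described above and then systemctl is-active --quiet to confirm that each service came back up.

```shell
#!/bin/bash

# Restart each named service and report any that is not active afterwards.
# The function name is hypothetical; service names are supplied by the caller.
restart_services() {
    local failed=0 svc
    for svc in "$@"; do
        if systemctl restart "$svc" && systemctl is-active --quiet "$svc"; then
            echo "restarted: $svc"
        else
            echo "failed to restart: $svc" >&2
            failed=1
        fi
    done
    return "$failed"
}
```

For example, running restart_services sshd vsftpd as root restarts both services and returns a non-zero status if either of them fails to become active.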

Other Software

Follow the instructions outlined by the resources linked below to correctly update

the following applications.

Red Hat Directory Server: See the Release Notes for the version of the

Red Hat Directory Server in question at

https://access.redhat.com/site/documentation/en-US/Red_Hat_Directory_Server/.

Red Hat Enterprise Virtualization Manager: See the Installation Guide

for the version of Red Hat Enterprise Virtualization in question at

https://access.redhat.com/site/documentation/en-US/Red_Hat_Enterprise_Virtualization/.

3.2. Using the Red Hat Customer Portal

The Red Hat Customer Portal at https://access.redhat.com/ is the main customer-oriented

resource for official information related to Red Hat products. You can use it to find

documentation, manage your subscriptions, download products and updates, open support

cases, and learn about security updates.

3.2.1. Viewing Security Advisories on the Customer Portal

To view security advisories (errata) relevant to the systems for which you have active

subscriptions, log into the Customer Portal at https://access.redhat.com/ and click on the

Download Products & Updates button on the main page. When you enter the Software

& Download Center page, continue by clicking on the Errata button to see a list of

advisories pertinent to your registered systems.

To browse a list of all security updates for all active Red Hat products, go to Security →

Security Updates → Active Products using the navigation menu at the top of the page.

Click on the erratum code in the left part of the table to display more detailed information

about the individual advisories. The next page contains not only a description of the given

erratum, including its causes, consequences, and required fixes, but also a list of all

packages that the particular erratum updates along with instructions on how to apply the

updates. The page also includes links to relevant references, such as related CVEs.

3.2.2. Navigating CVE Customer Portal Pages

The CVE (Common Vulnerabilities and Exposures) project, maintained by

The MITRE Corporation, is a list of standardized names for vulnerabilities and security

exposures. To browse a list of CVEs that pertain to Red Hat products on the Customer

Portal, log into your account at https://access.redhat.com/ and navigate to Security →

Resources → CVE Database using the navigation menu at the top of the page.

Click on the CVE code in the left part of the table to display more detailed information

about the individual vulnerabilities. The next page contains not only a description of the

given CVE but also a list of affected Red Hat products along with links to relevant Red Hat

errata.

3.2.3. Understanding Issue Severity Classification

All security issues discovered in Red Hat products are assigned an impact rating by

Red Hat Product Security according to the severity of the problem. The four-point scale

consists of the following levels: Low, Moderate, Important, and Critical. In addition to that,

every security issue is rated using the Common Vulnerability Scoring System (CVSS) base

scores.

Together, these ratings help you understand the impact of security issues, allowing you to