System Administrator's Guide Red Hat Enterprise Linux 7 Administrators En US

User Manual:

Open the PDF directly: View PDF ![]() .

.

Page Count: 569 [warning: Documents this large are best viewed by clicking the View PDF Link!]

- Table of Contents

- Part I. Basic System Configuration

- Chapter 1. System Locale and Keyboard Configuration

- Chapter 2. Configuring the Date and Time

- Chapter 3. Managing Users and Groups

- Chapter 4. Access Control Lists

- Chapter 5. Gaining Privileges

- Part II. Subscription and Support

- Chapter 6. Registering the System and Managing Subscriptions

- Chapter 7. Accessing Support Using the Red Hat Support Tool

- 7.1. Installing the Red Hat Support Tool

- 7.2. Registering the Red Hat Support Tool Using the Command Line

- 7.3. Using the Red Hat Support Tool in Interactive Shell Mode

- 7.4. Configuring the Red Hat Support Tool

- 7.5. Opening and Updating Support Cases Using Interactive Mode

- 7.6. Viewing Support Cases on the Command Line

- 7.7. Additional Resources

- Part III. Installing and Managing Software

- Chapter 8. Yum

- 8.1. Checking For and Updating Packages

- 8.2. Working with Packages

- 8.3. Working with Package Groups

- 8.4. Working with Transaction History

- 8.5. Configuring Yum and Yum Repositories

- 8.6. Yum Plug-ins

- 8.7. Additional Resources

- Part IV. Infrastructure Services

- Chapter 9. Managing Services with systemd

- 9.1. Introduction to systemd

- 9.2. Managing System Services

- 9.3. Working with systemd Targets

- 9.4. Shutting Down, Suspending, and Hibernating the System

- 9.5. Controlling systemd on a Remote Machine

- 9.6. Creating and Modifying systemd Unit Files

- 9.7. Additional Resources

- Chapter 10. OpenSSH

- Chapter 11. TigerVNC

- Part V. Servers

- Chapter 12. Web Servers

- 12.1. The Apache HTTP Server

- 12.1.1. Notable Changes

- 12.1.2. Updating the Configuration

- 12.1.3. Running the httpd Service

- 12.1.4. Editing the Configuration Files

- 12.1.5. Working with Modules

- 12.1.6. Setting Up Virtual Hosts

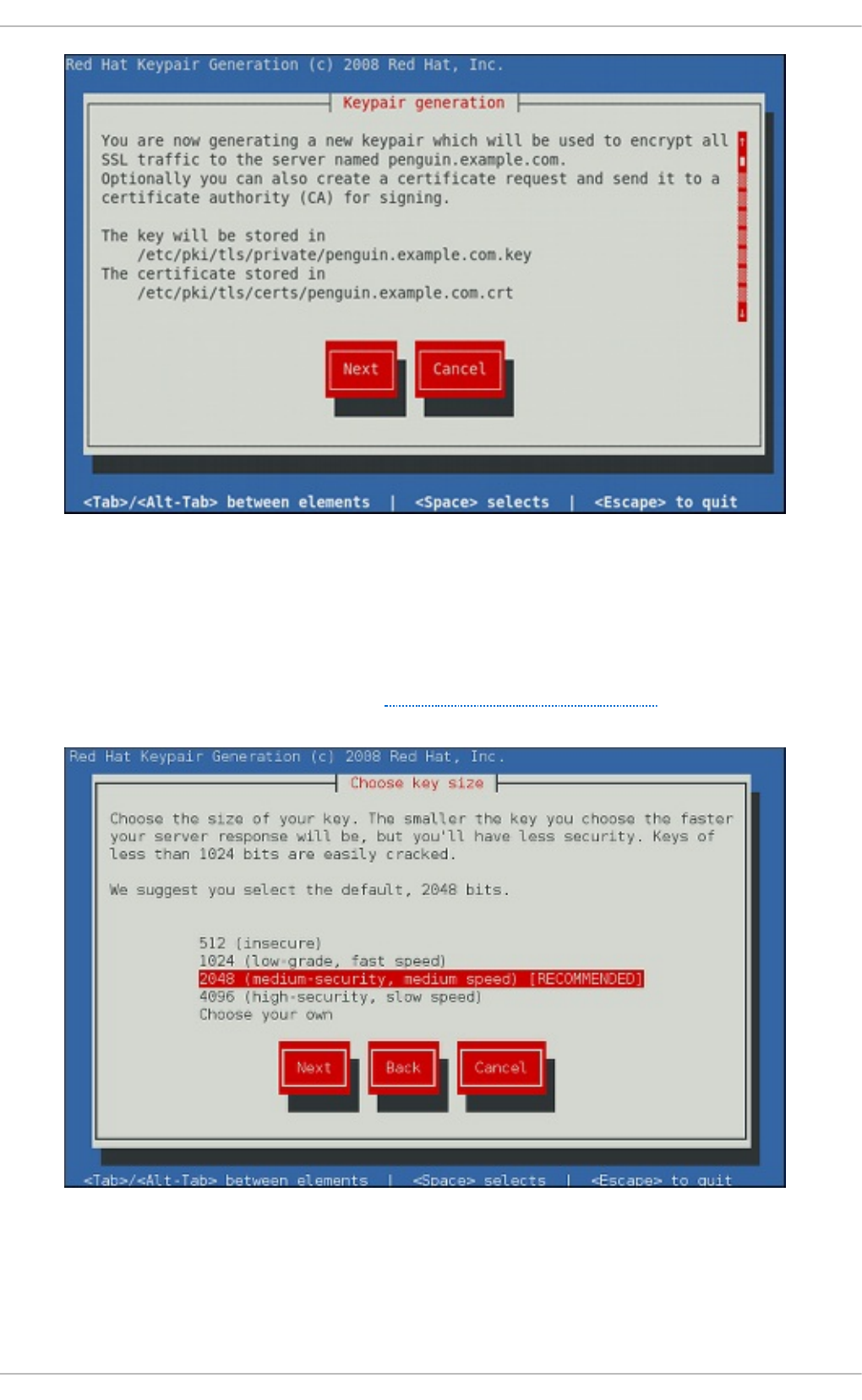

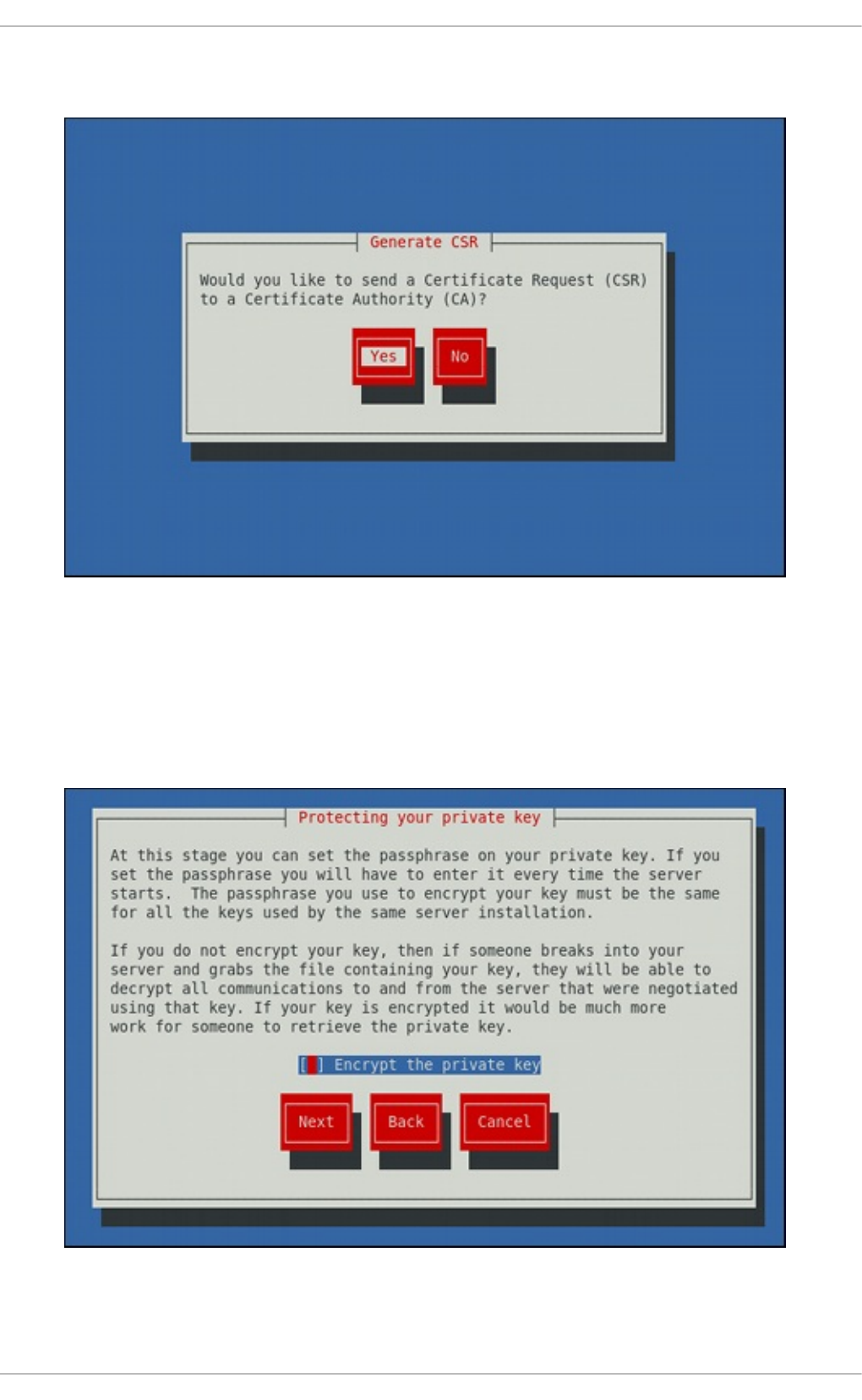

- 12.1.7. Setting Up an SSL Server

- 12.1.8. Enabling the mod_ssl Module

- 12.1.9. Enabling the mod_nss Module

- 12.1.10. Using an Existing Key and Certificate

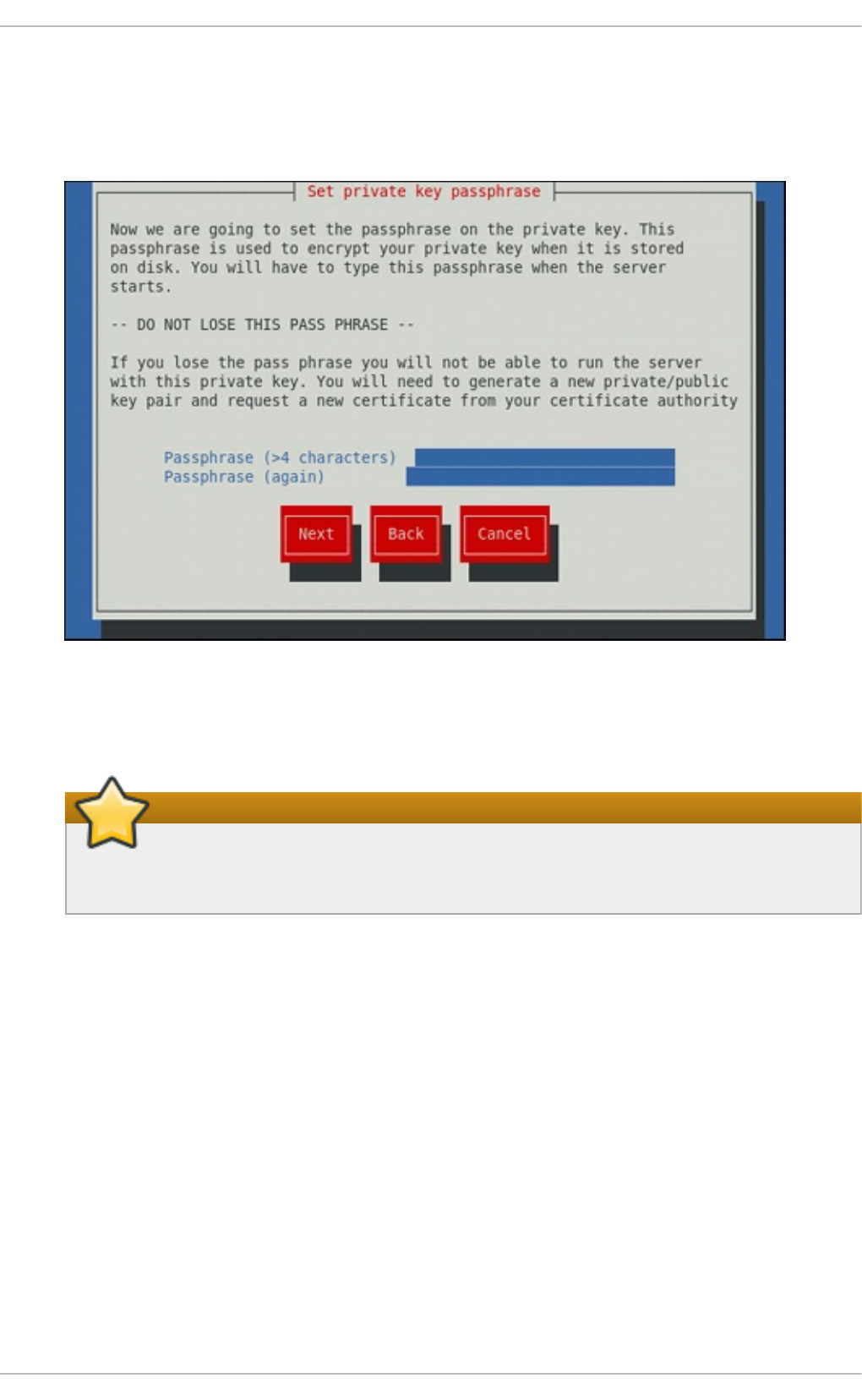

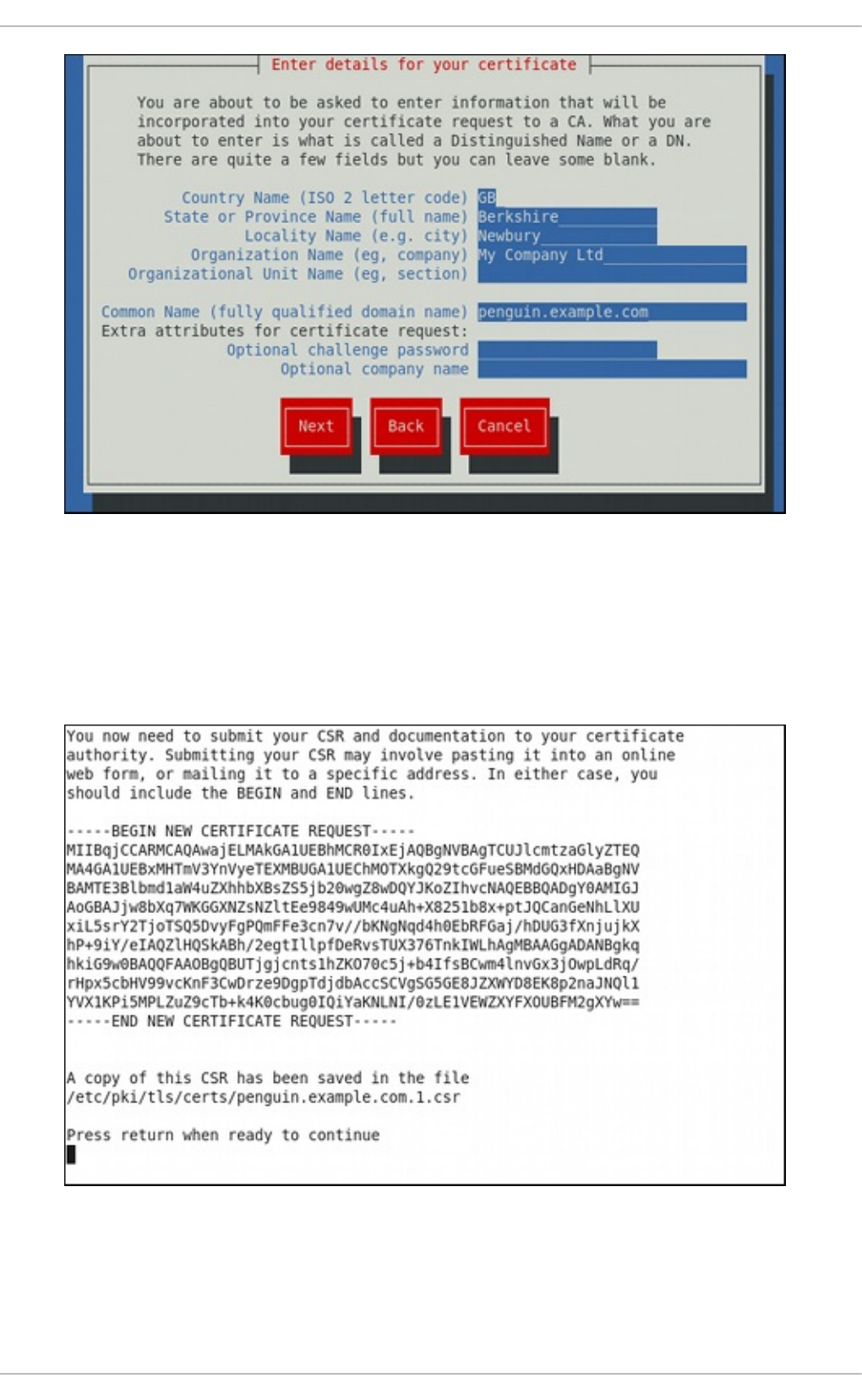

- 12.1.11. Generating a New Key and Certificate

- 12.1.12. Configure the Firewall for HTTP and HTTPS Using the Command Line

- 12.1.13. Additional Resources

- 12.1. The Apache HTTP Server

- Chapter 13. Mail Servers

- Chapter 14. File and Print Servers

- 14.1. Samba

- 14.1.1. Introduction to Samba

- 14.1.2. Samba Daemons and Related Services

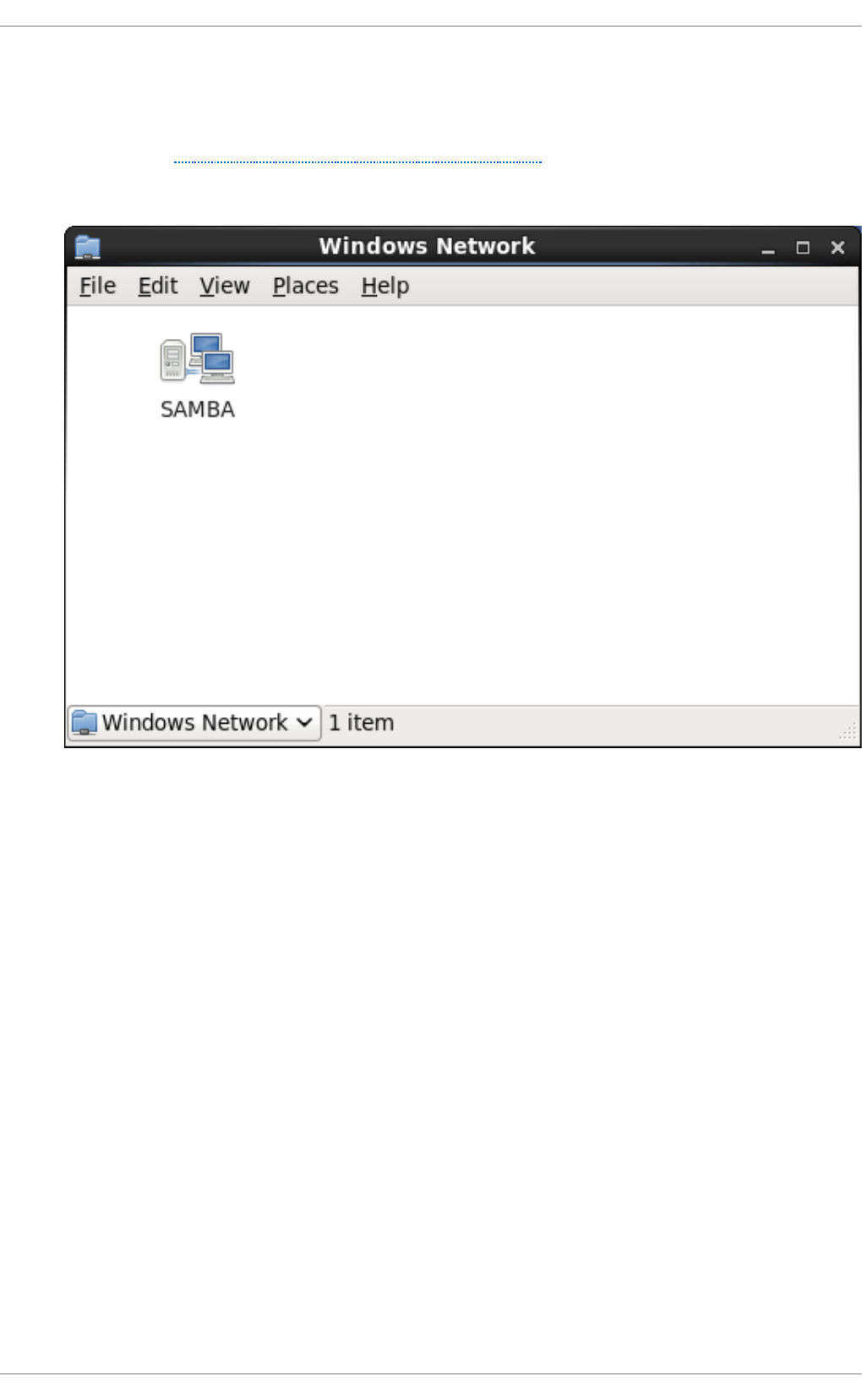

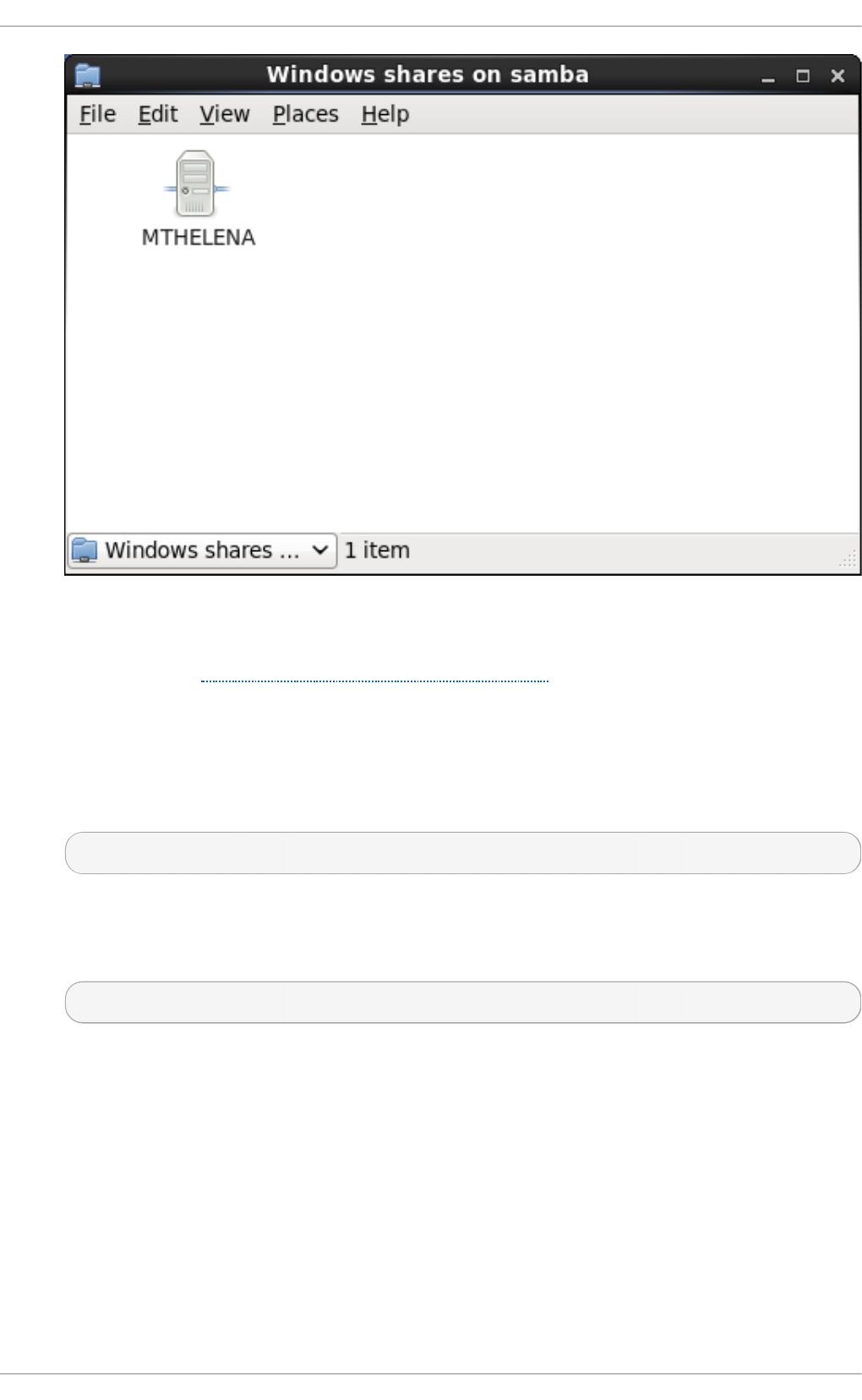

- 14.1.3. Connecting to a Samba Share

- 14.1.4. Mounting the Share

- 14.1.5. Configuring a Samba Server

- 14.1.6. Starting and Stopping Samba

- 14.1.7. Samba Security Modes

- 14.1.8. Samba Network Browsing

- 14.1.9. Samba Distribution Programs

- 14.1.10. Additional Resources

- 14.2. FTP

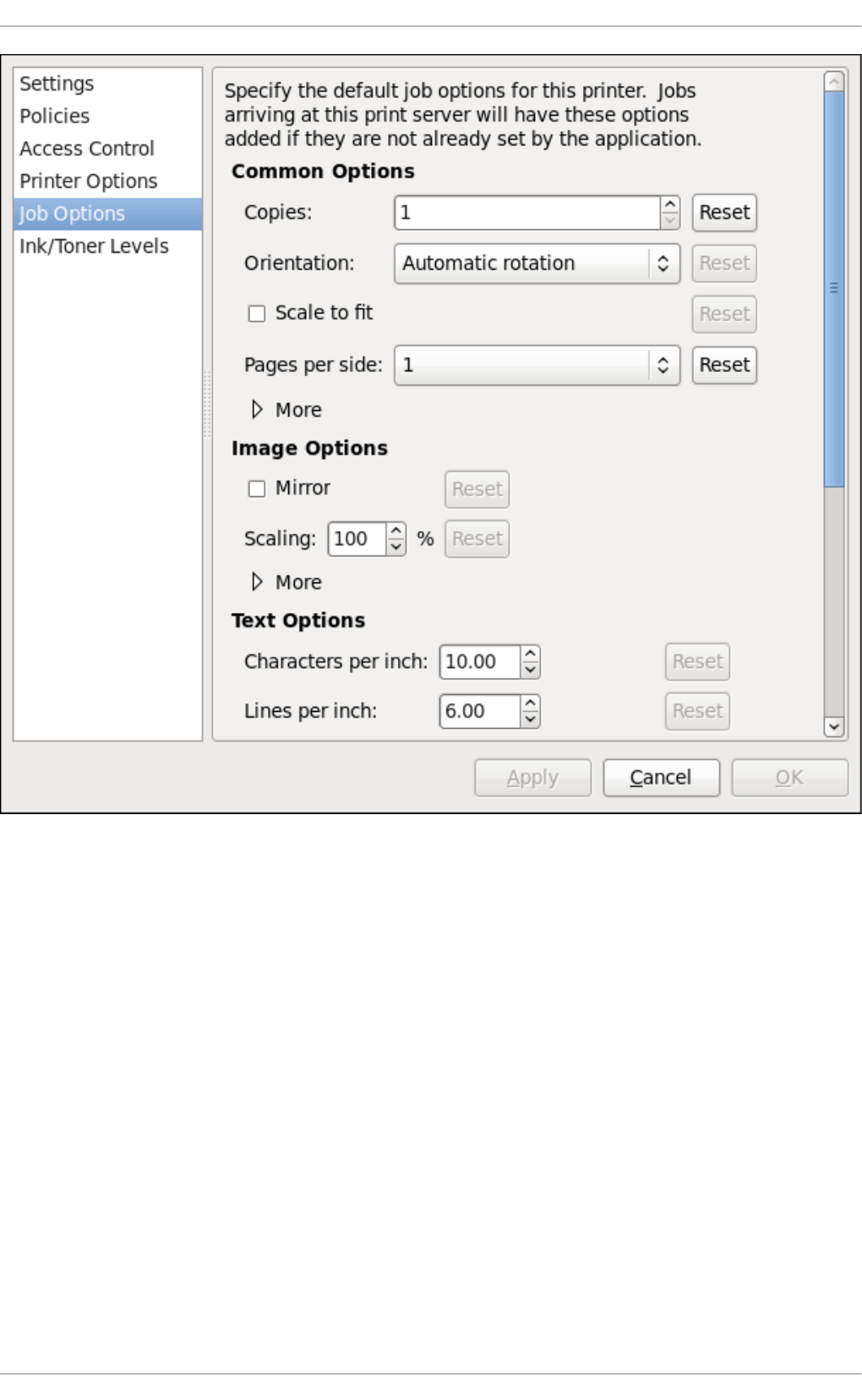

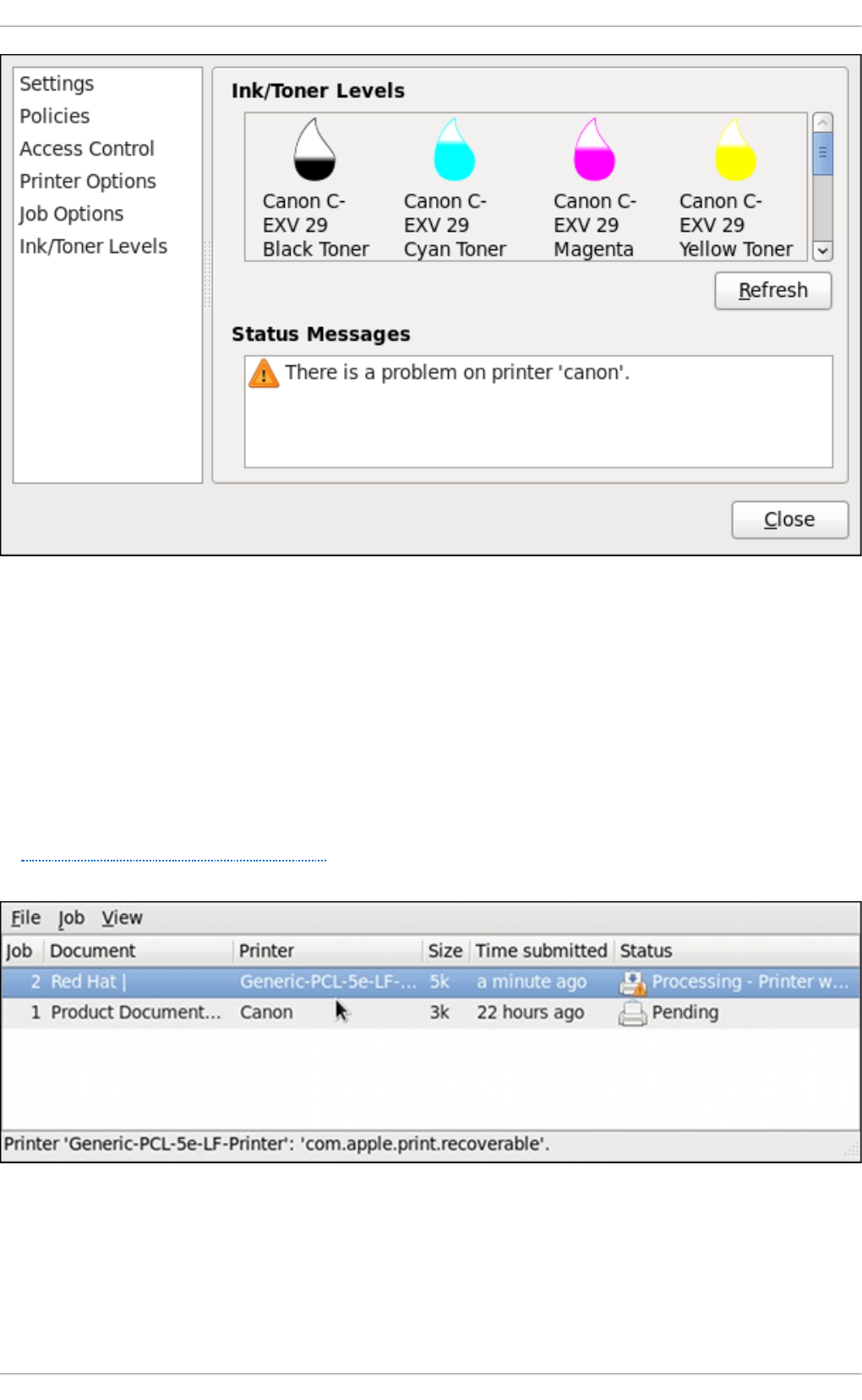

- 14.3. Print Settings

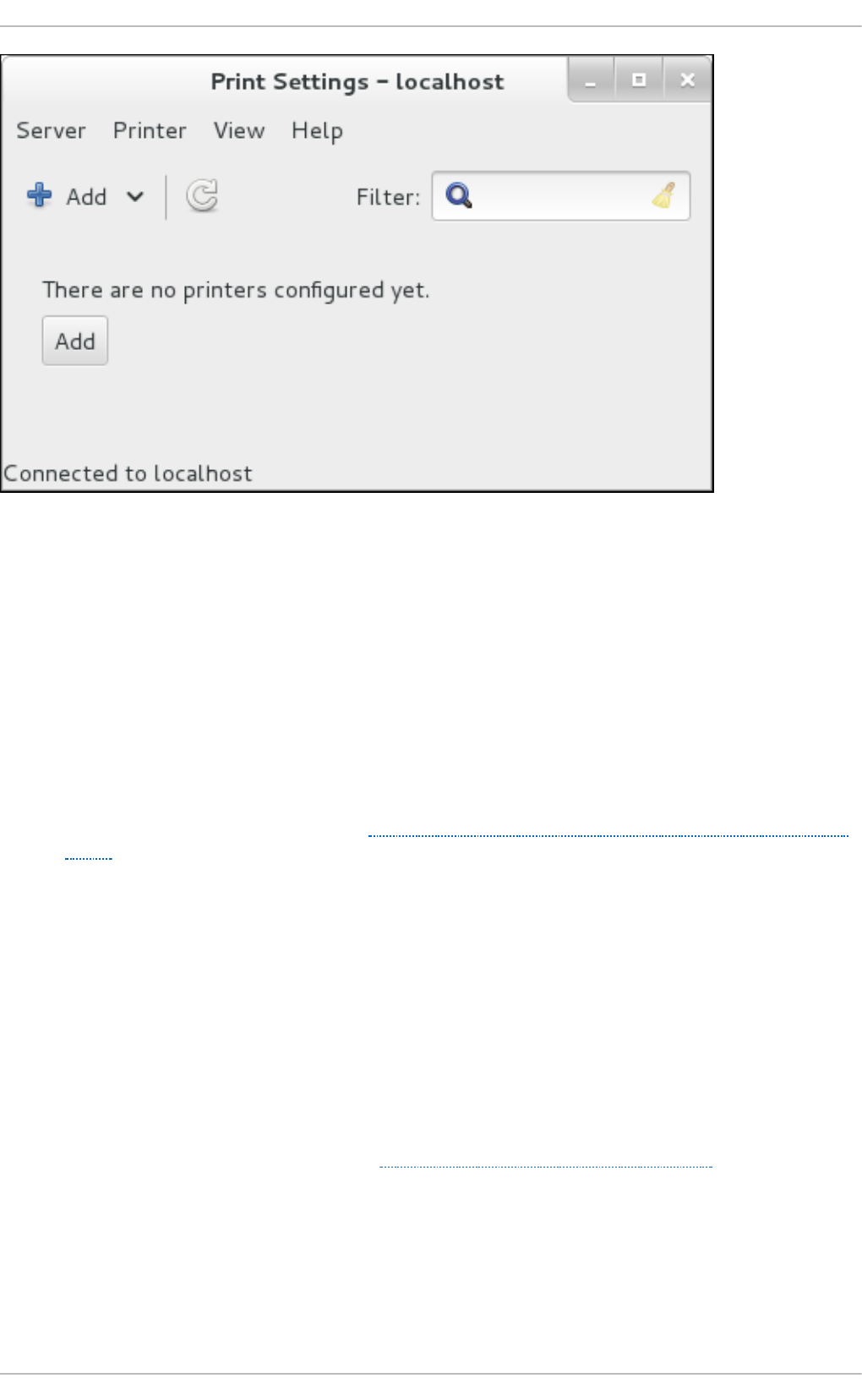

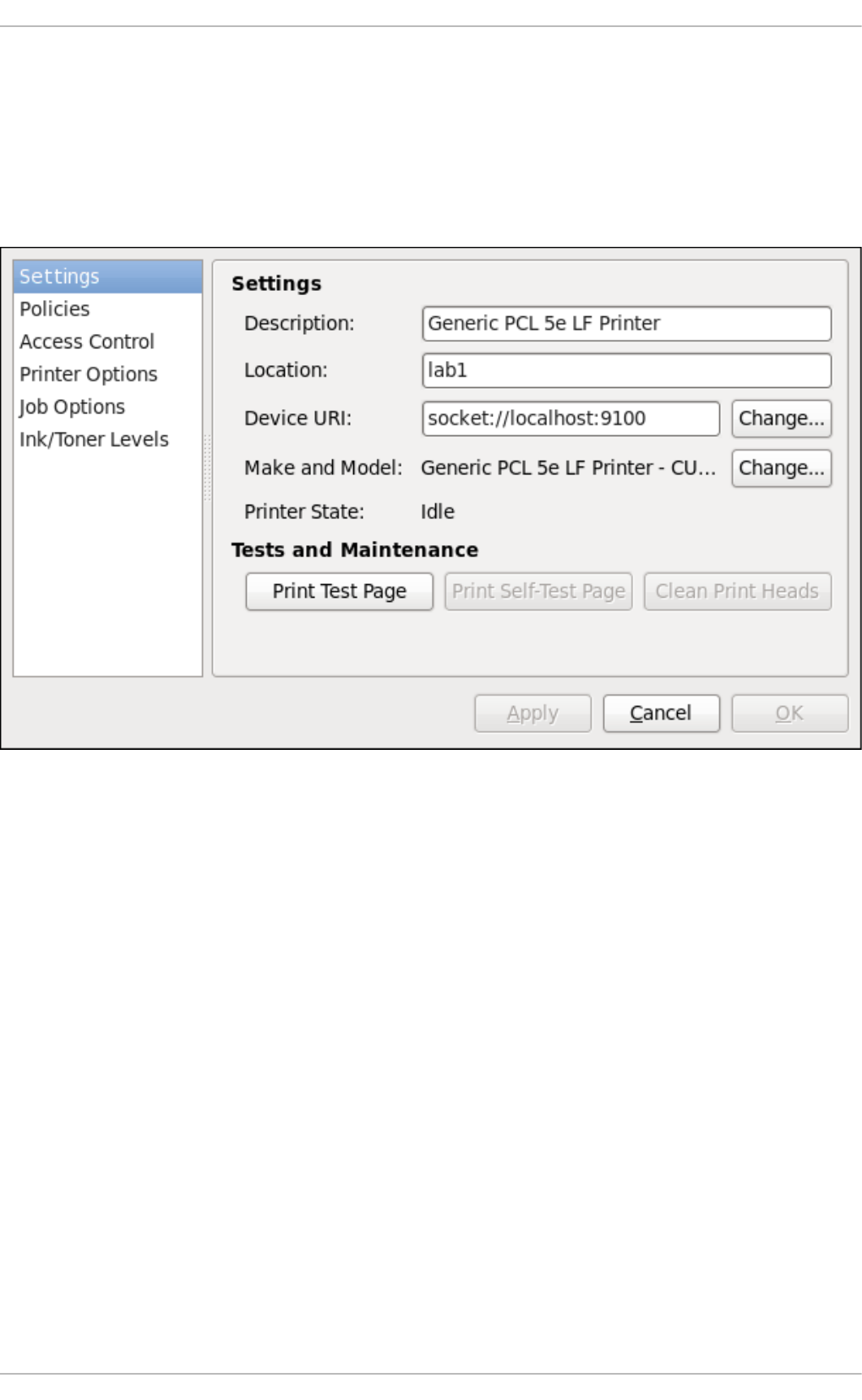

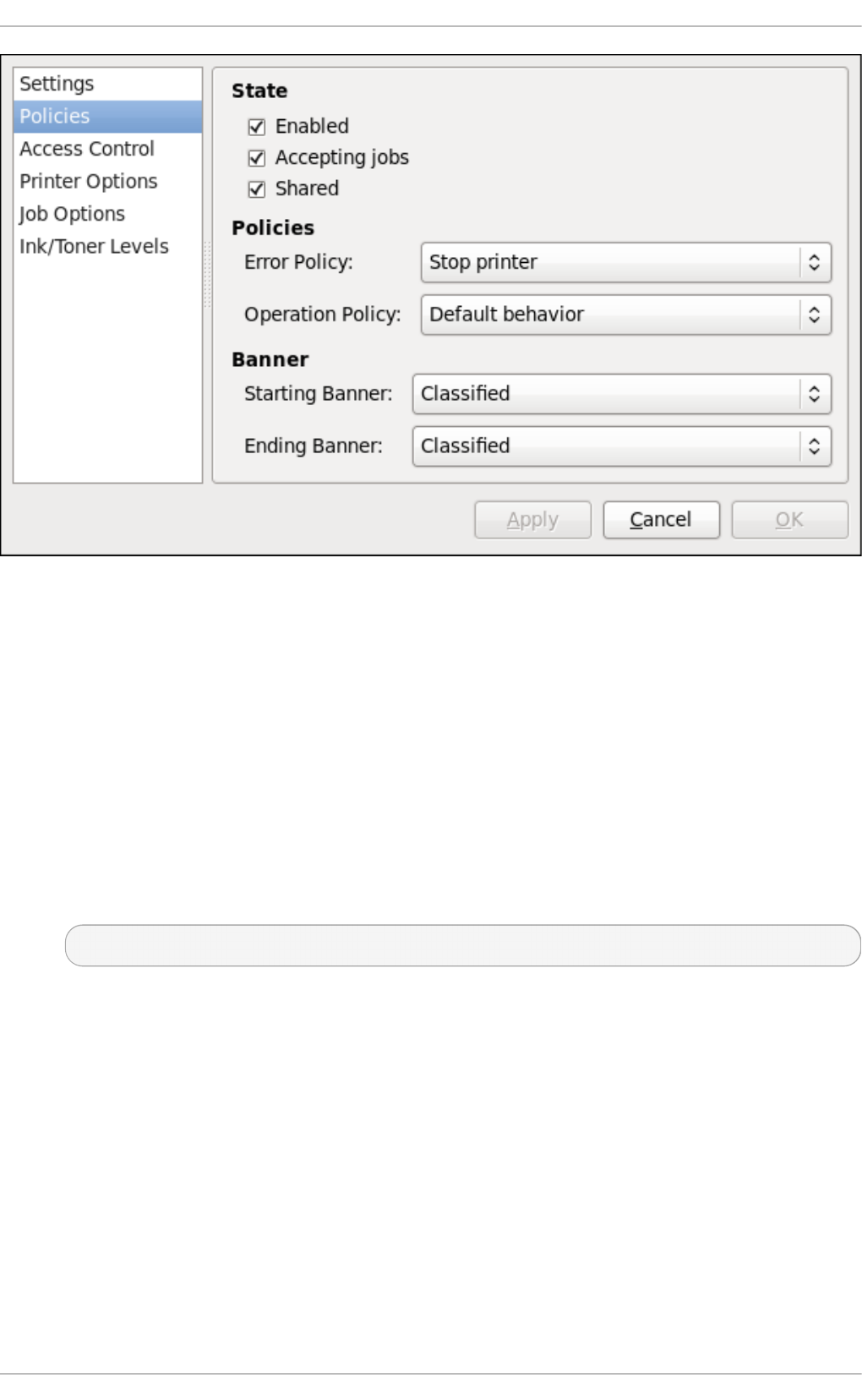

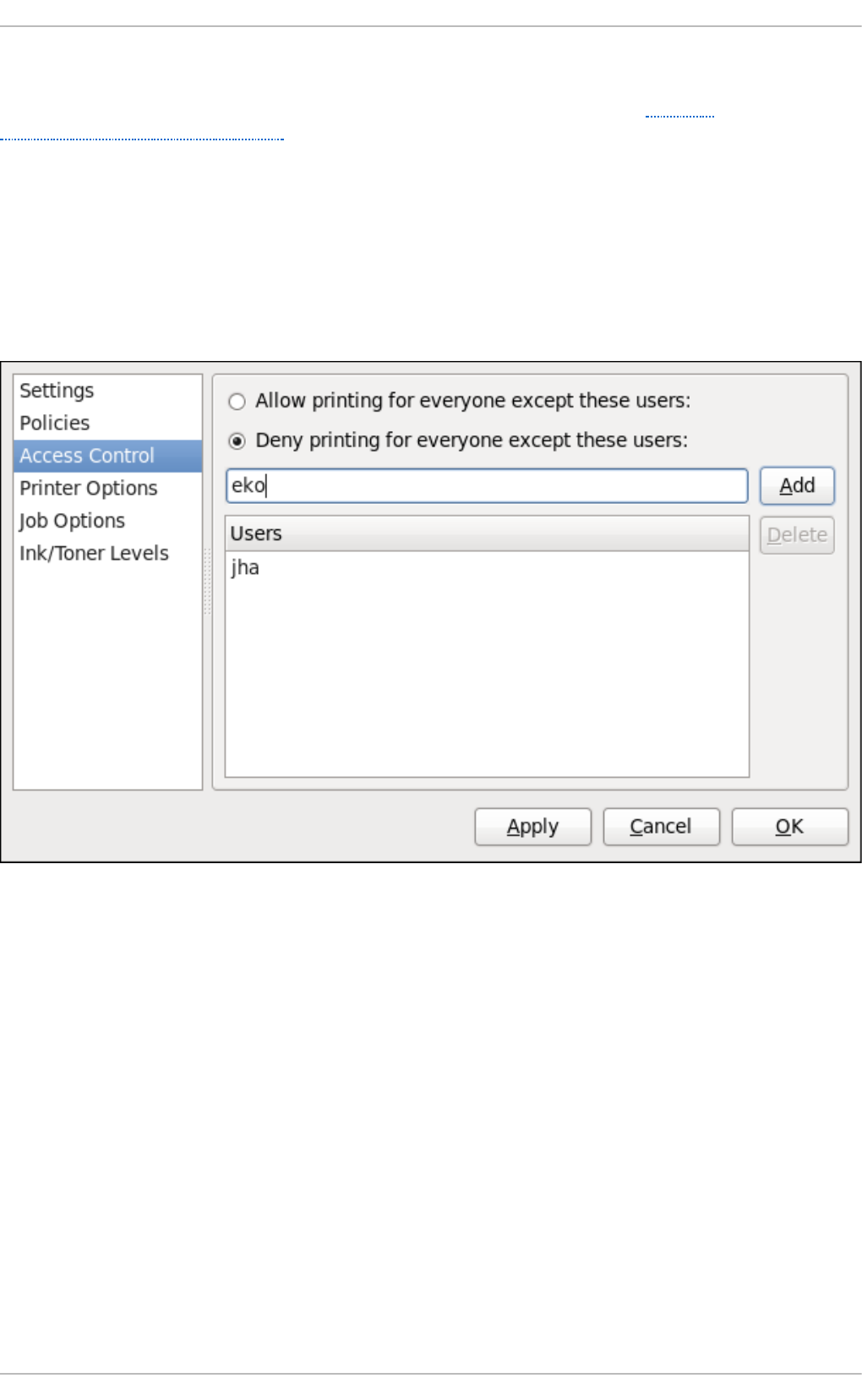

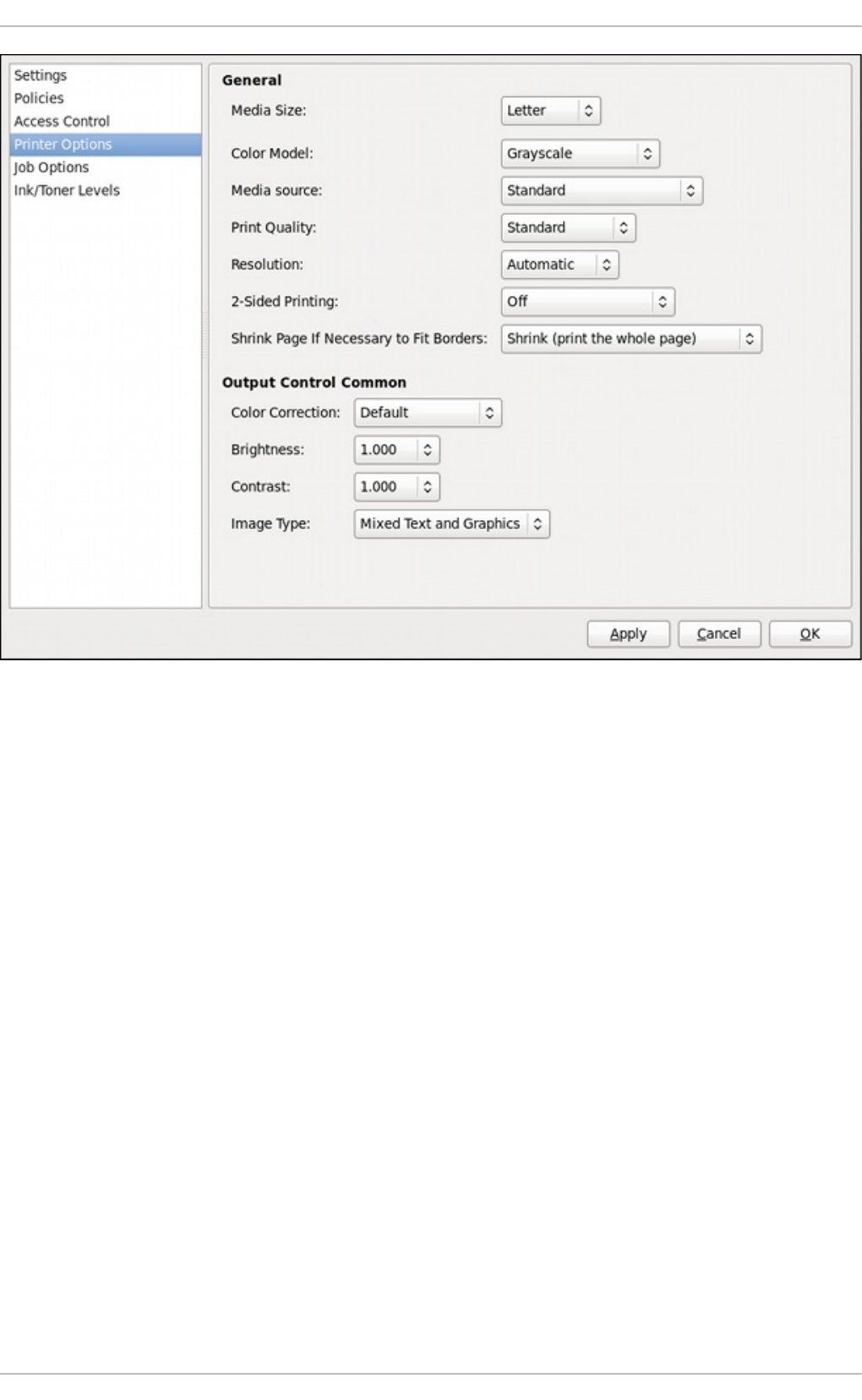

- 14.3.1. Starting the Print Settings Configuration Tool

- 14.3.2. Starting Printer Setup

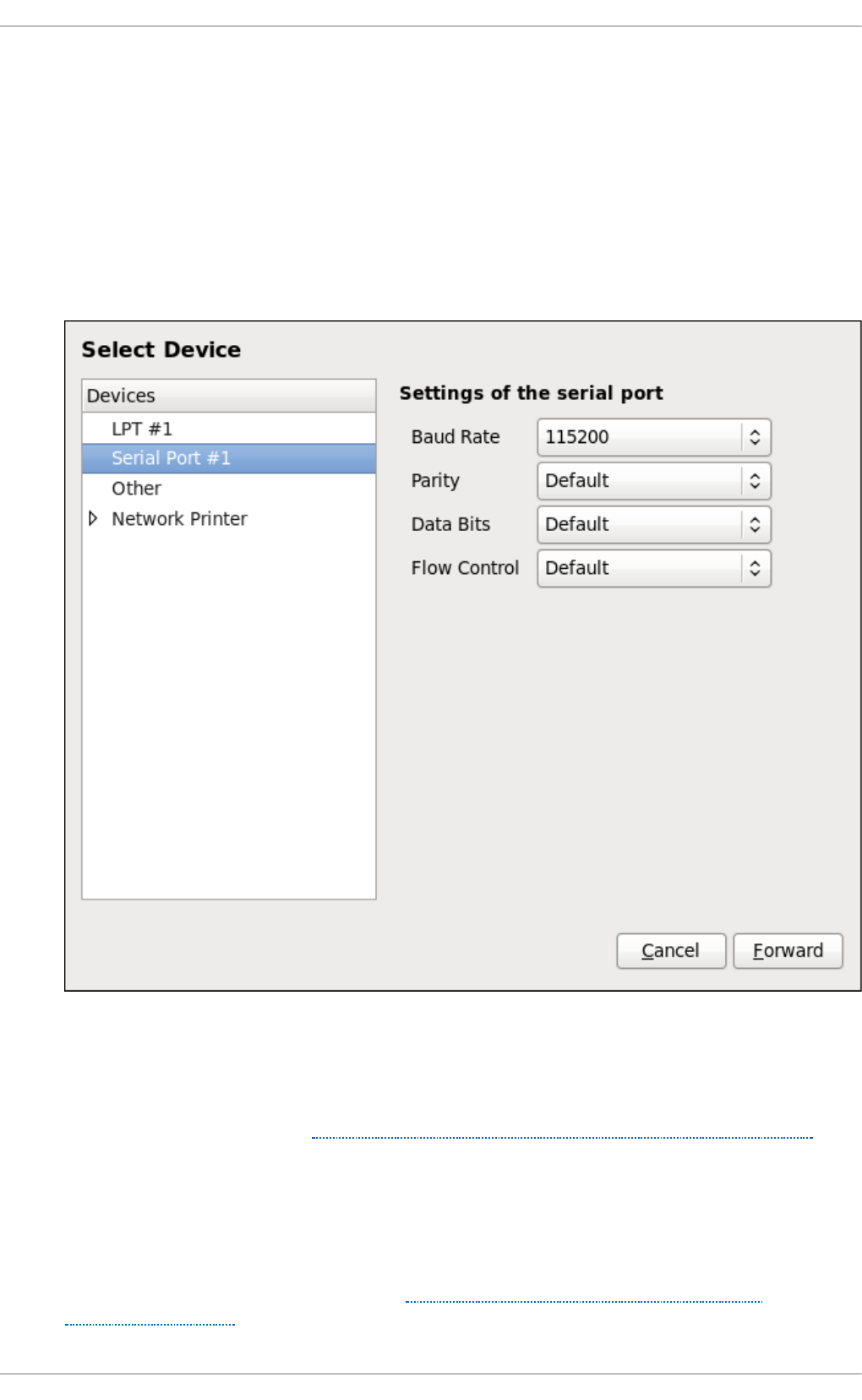

- 14.3.3. Adding a Local Printer

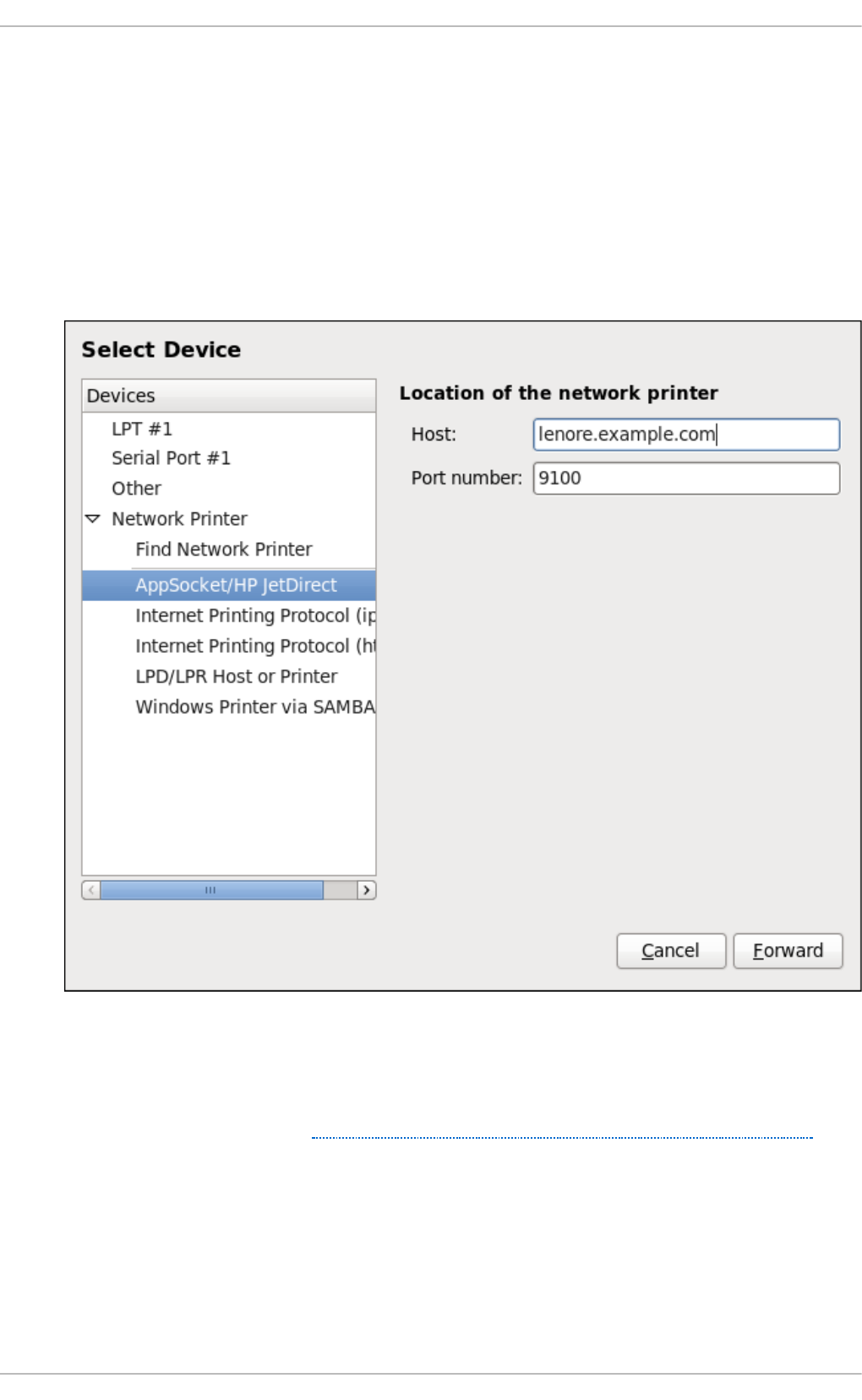

- 14.3.4. Adding an AppSocket/HP JetDirect printer

- 14.3.5. Adding an IPP Printer

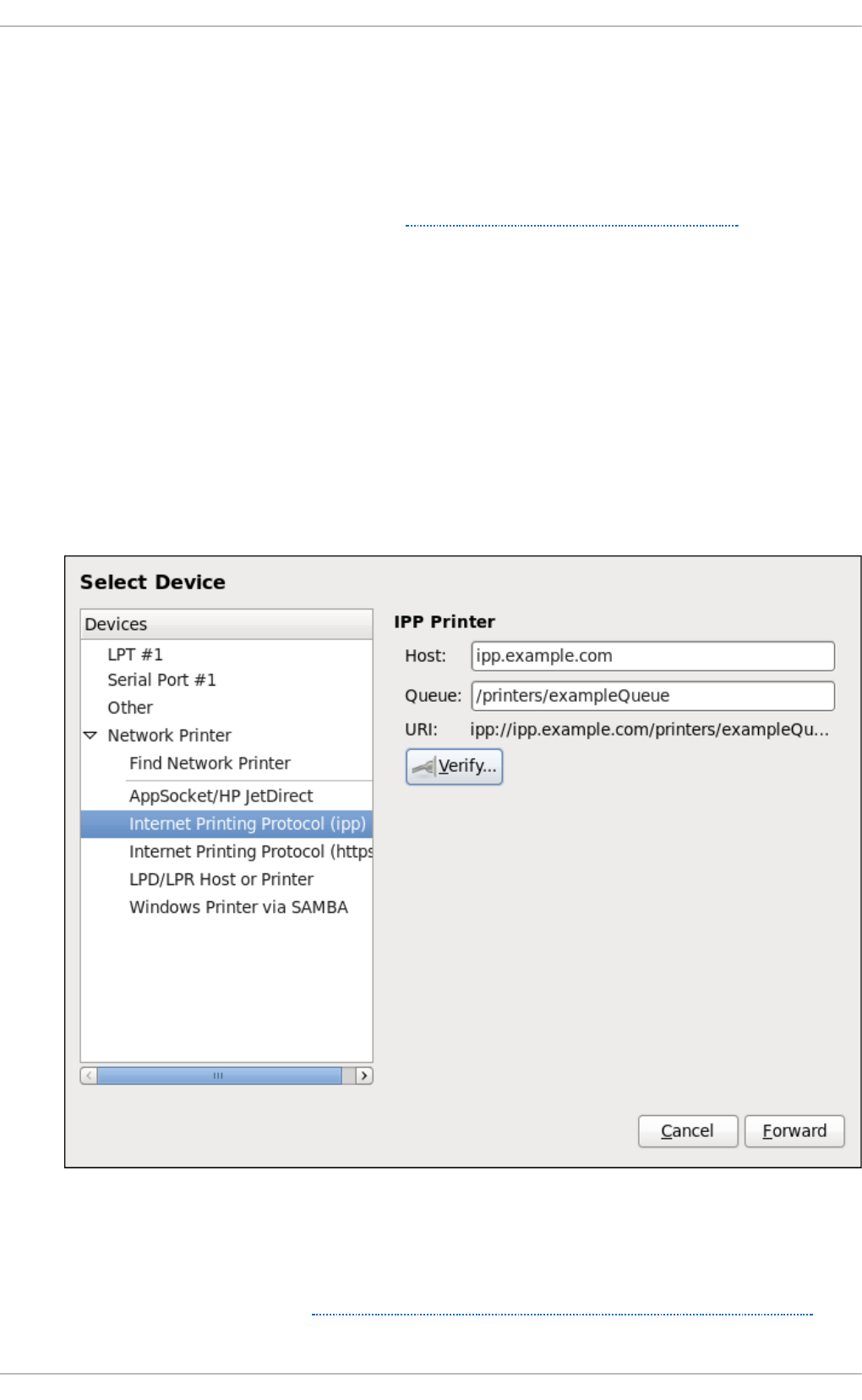

- 14.3.6. Adding an LPD/LPR Host or Printer

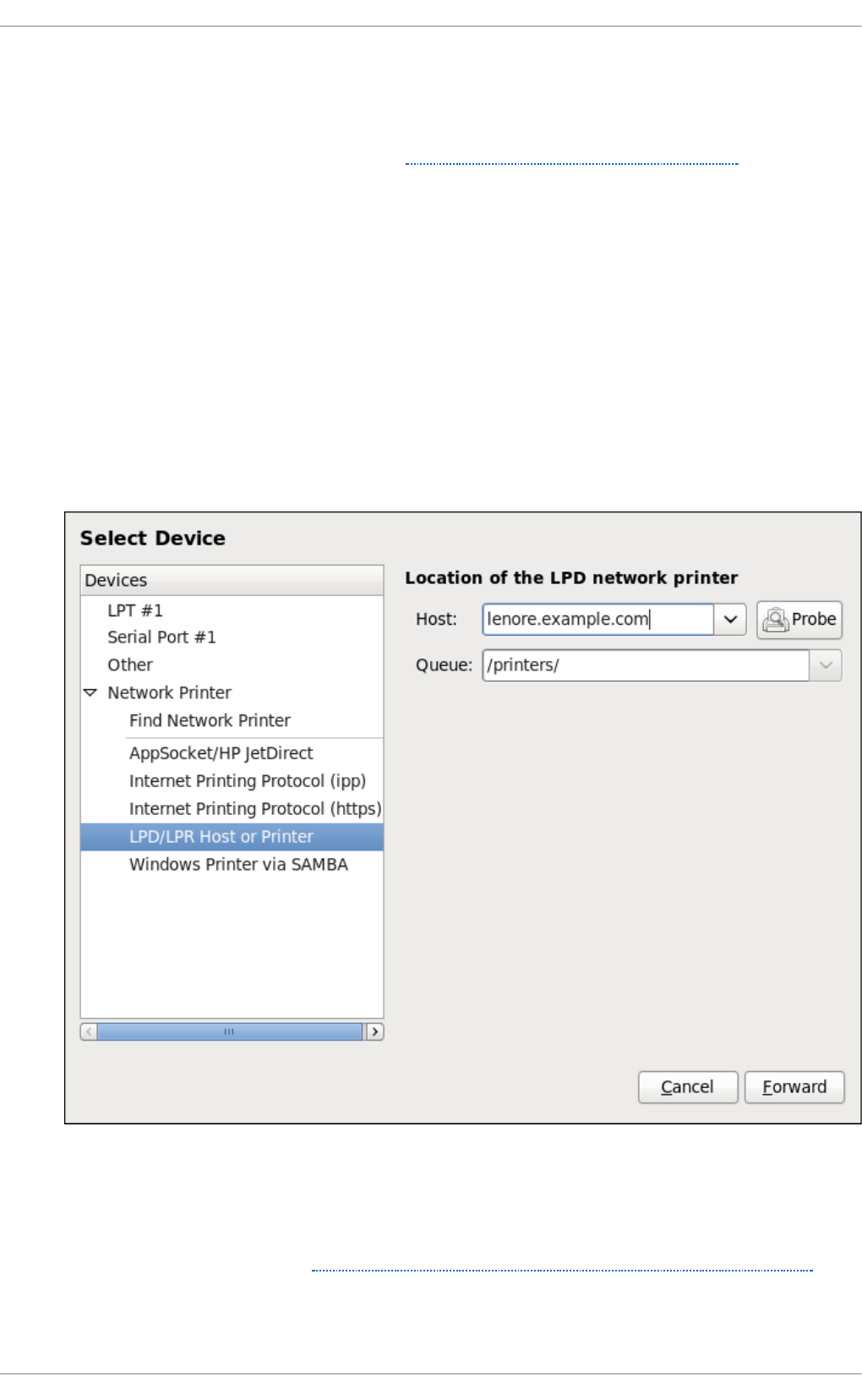

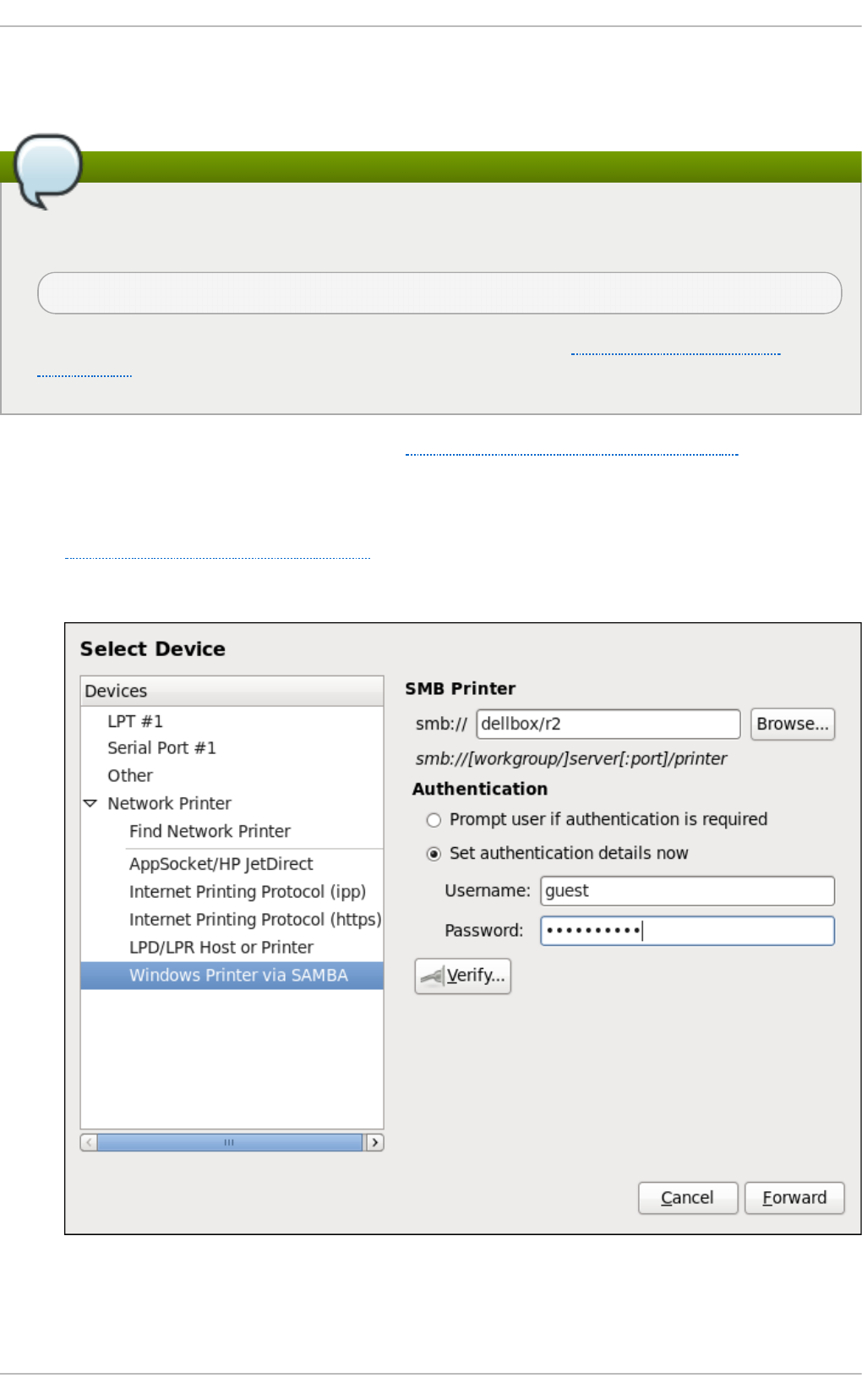

- 14.3.7. Adding a Samba (SMB) printer

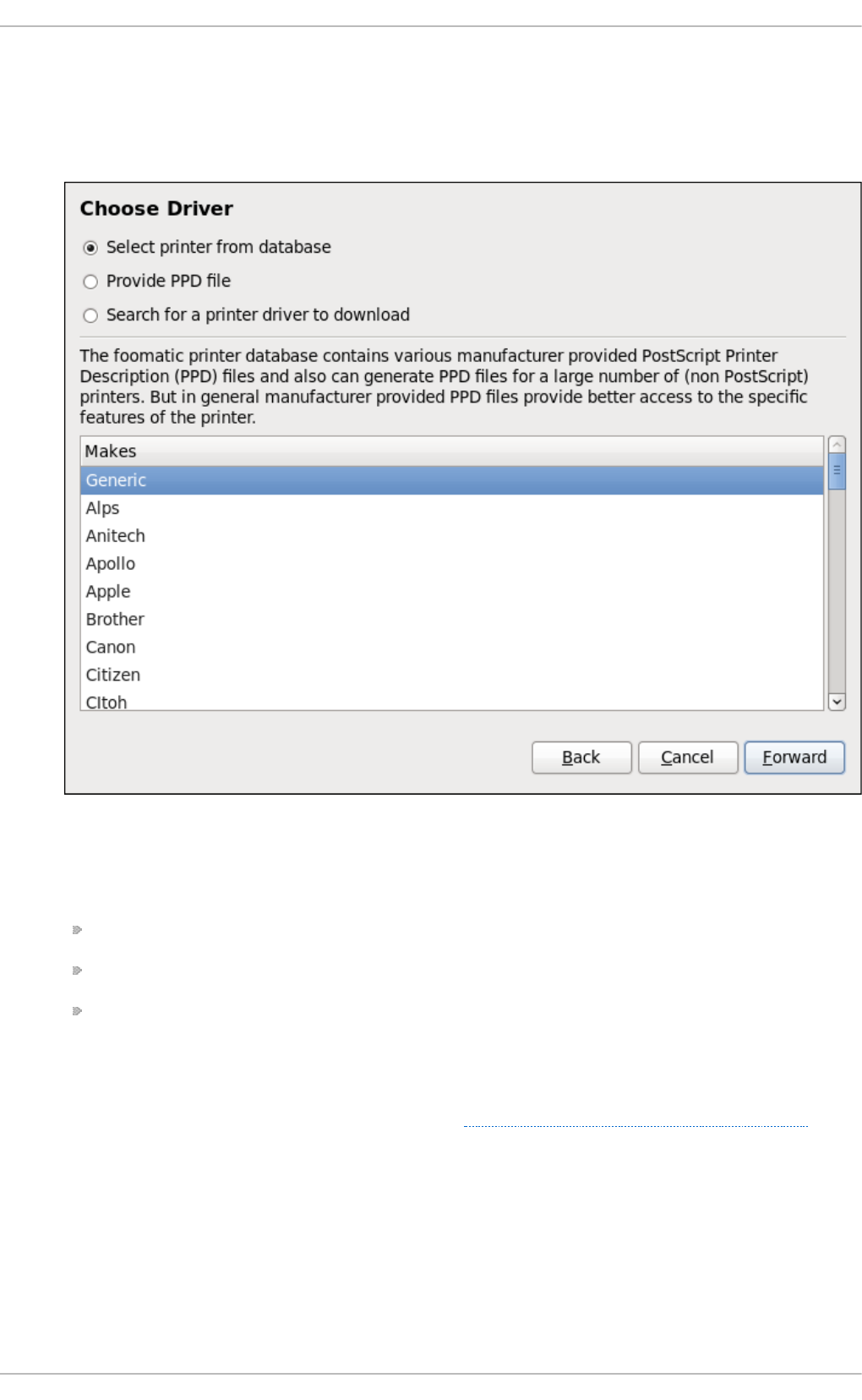

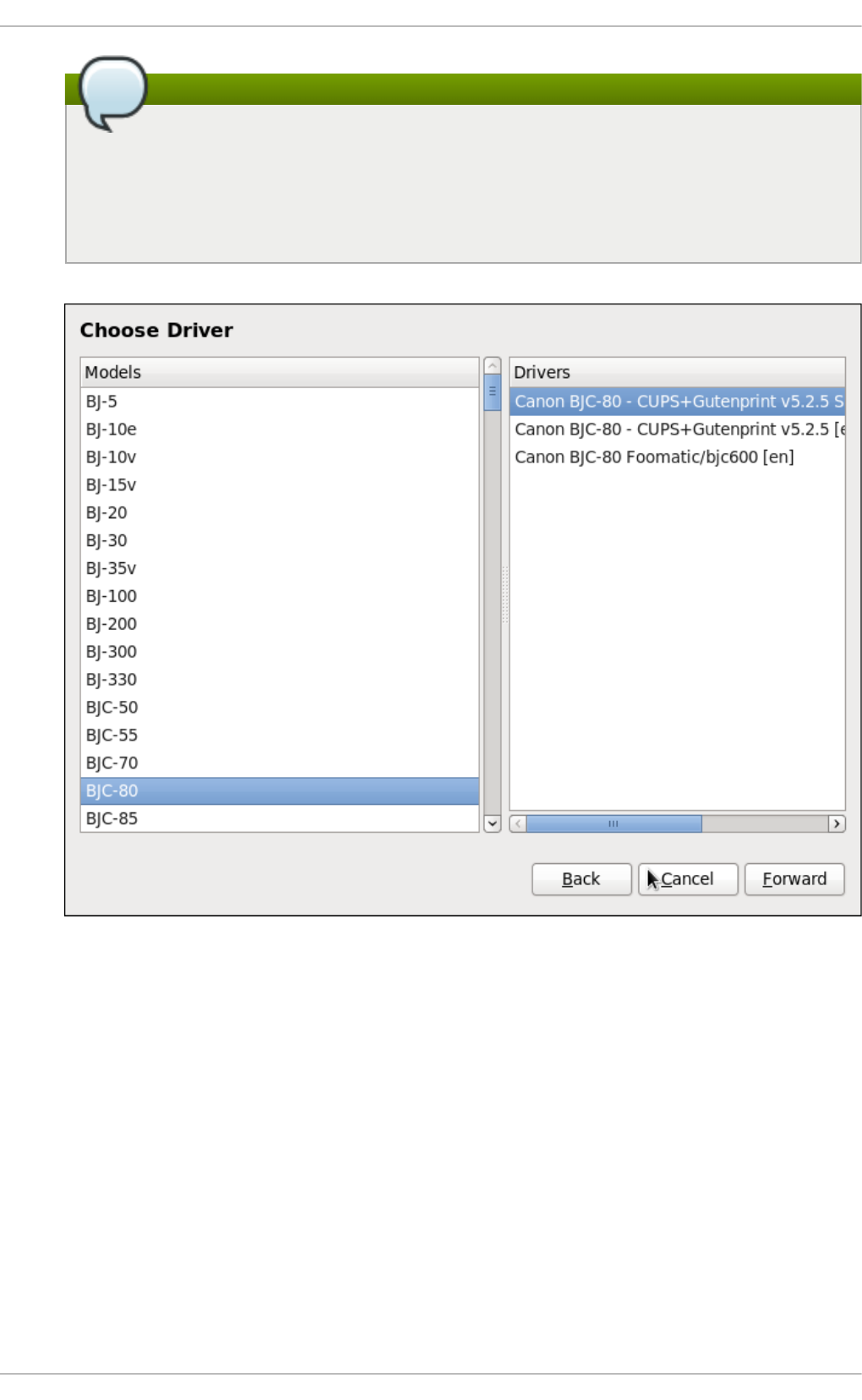

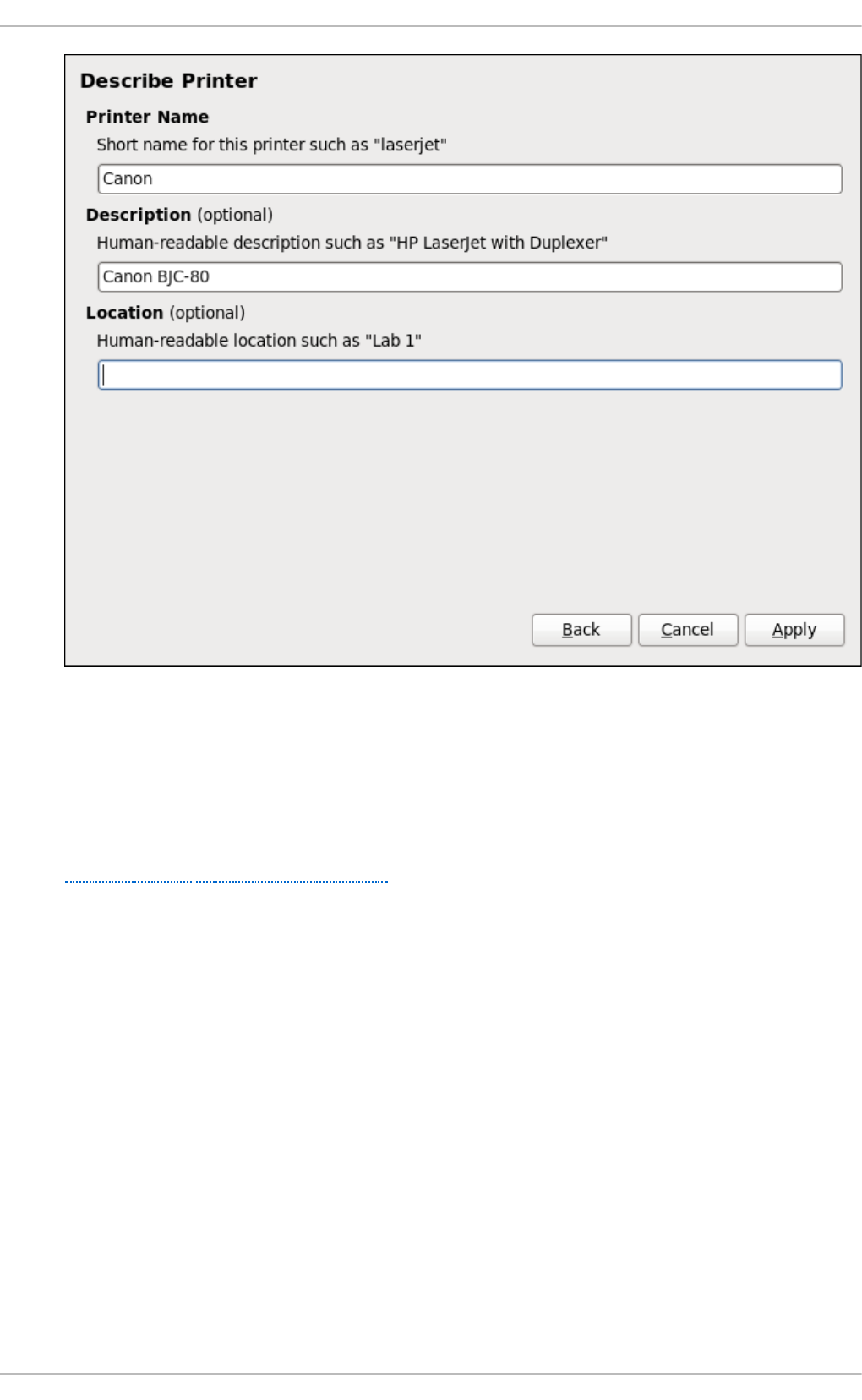

- 14.3.8. Selecting the Printer Model and Finishing

- 14.3.9. Printing a Test Page

- 14.3.10. Modifying Existing Printers

- 14.3.11. Additional Resources

- Installed Documentation

- Online Documentation

- 14.1. Samba

- Chapter 15. Configuring NTP Using the chrony Suite

- 15.1. Introduction to the chrony Suite

- 15.2. Understanding chrony and Its Configuration

- 15.3. Using chrony

- 15.4. Setting Up chrony for Different Environments

- 15.5. Using chronyc

- 15.6. Additional Resources

- Chapter 16. Configuring NTP Using ntpd

- 16.1. Introduction to NTP

- 16.2. NTP Strata

- 16.3. Understanding NTP

- 16.4. Understanding the Drift File

- 16.5. UTC, Timezones, and DST

- 16.6. Authentication Options for NTP

- 16.7. Managing the Time on Virtual Machines

- 16.8. Understanding Leap Seconds

- 16.9. Understanding the ntpd Configuration File

- 16.10. Understanding the ntpd Sysconfig File

- 16.11. Disabling chrony

- 16.12. Checking if the NTP Daemon is Installed

- 16.13. Installing the NTP Daemon (ntpd)

- 16.14. Checking the Status of NTP

- 16.15. Configure the Firewall to Allow Incoming NTP Packets

- 16.16. Configure ntpdate Servers

- 16.17. Configure NTP

- 16.17.1. Configure Access Control to an NTP Service

- 16.17.2. Configure Rate Limiting Access to an NTP Service

- 16.17.3. Adding a Peer Address

- 16.17.4. Adding a Server Address

- 16.17.5. Adding a Broadcast or Multicast Server Address

- 16.17.6. Adding a Manycast Client Address

- 16.17.7. Adding a Broadcast Client Address

- 16.17.8. Adding a Manycast Server Address

- 16.17.9. Adding a Multicast Client Address

- 16.17.10. Configuring the Burst Option

- 16.17.11. Configuring the iburst Option

- 16.17.12. Configuring Symmetric Authentication Using a Key

- 16.17.13. Configuring the Poll Interval

- 16.17.14. Configuring Server Preference

- 16.17.15. Configuring the Time-to-Live for NTP Packets

- 16.17.16. Configuring the NTP Version to Use

- 16.18. Configuring the Hardware Clock Update

- 16.19. Configuring Clock Sources

- 16.20. Additional Resources

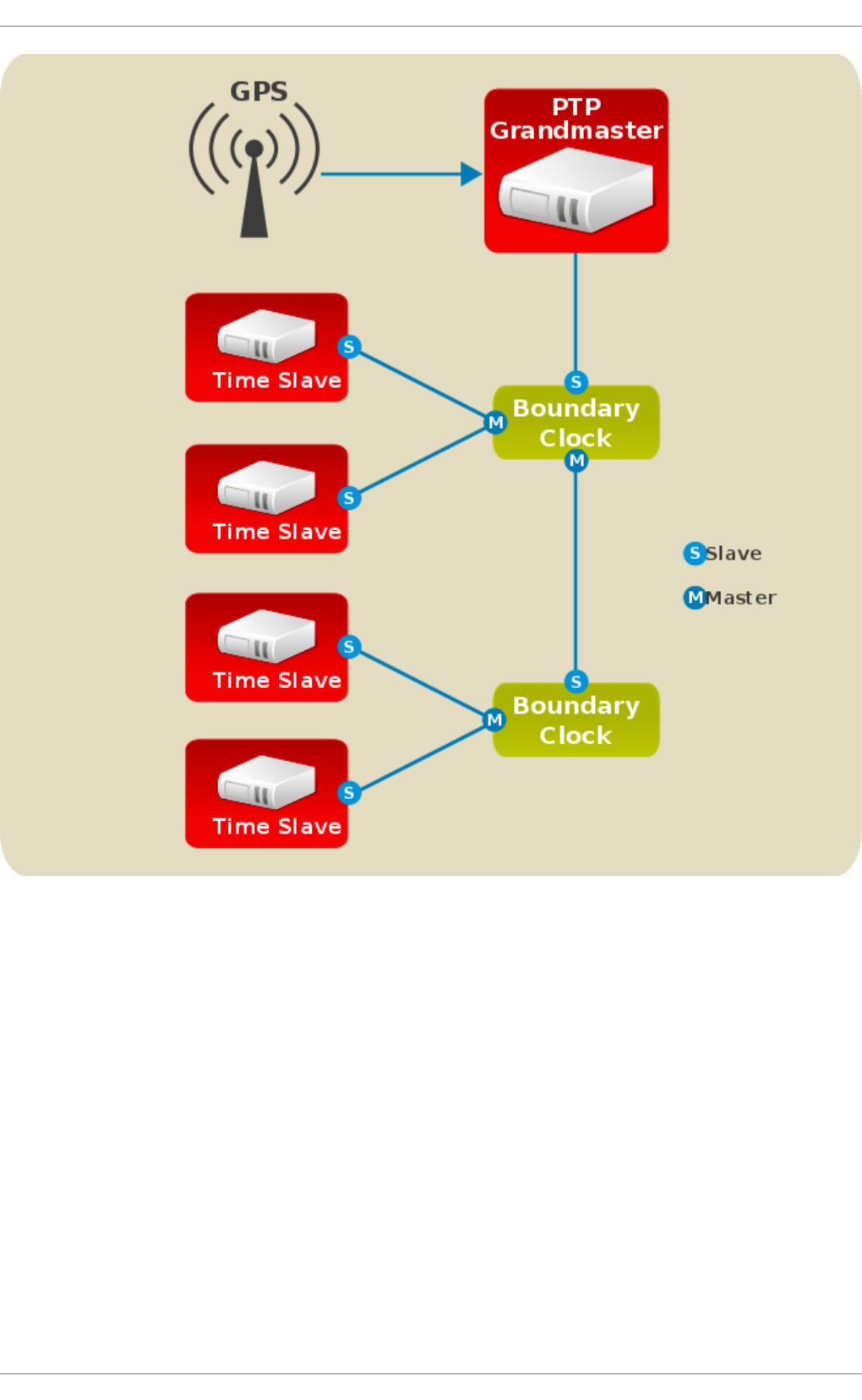

- Chapter 17. Configuring PTP Using ptp4l

- 17.1. Introduction to PTP

- 17.2. Using PTP

- 17.3. Using PTP with Multiple Interfaces

- 17.4. Specifying a Configuration File

- 17.5. Using the PTP Management Client

- 17.6. Synchronizing the Clocks

- 17.7. Verifying Time Synchronization

- 17.8. Serving PTP Time with NTP

- 17.9. Serving NTP Time with PTP

- 17.10. Synchronize to PTP or NTP Time Using timemaster

- 17.11. Improving Accuracy

- 17.12. Additional Resources

- Part VI. Monitoring and Automation

- Chapter 18. System Monitoring Tools

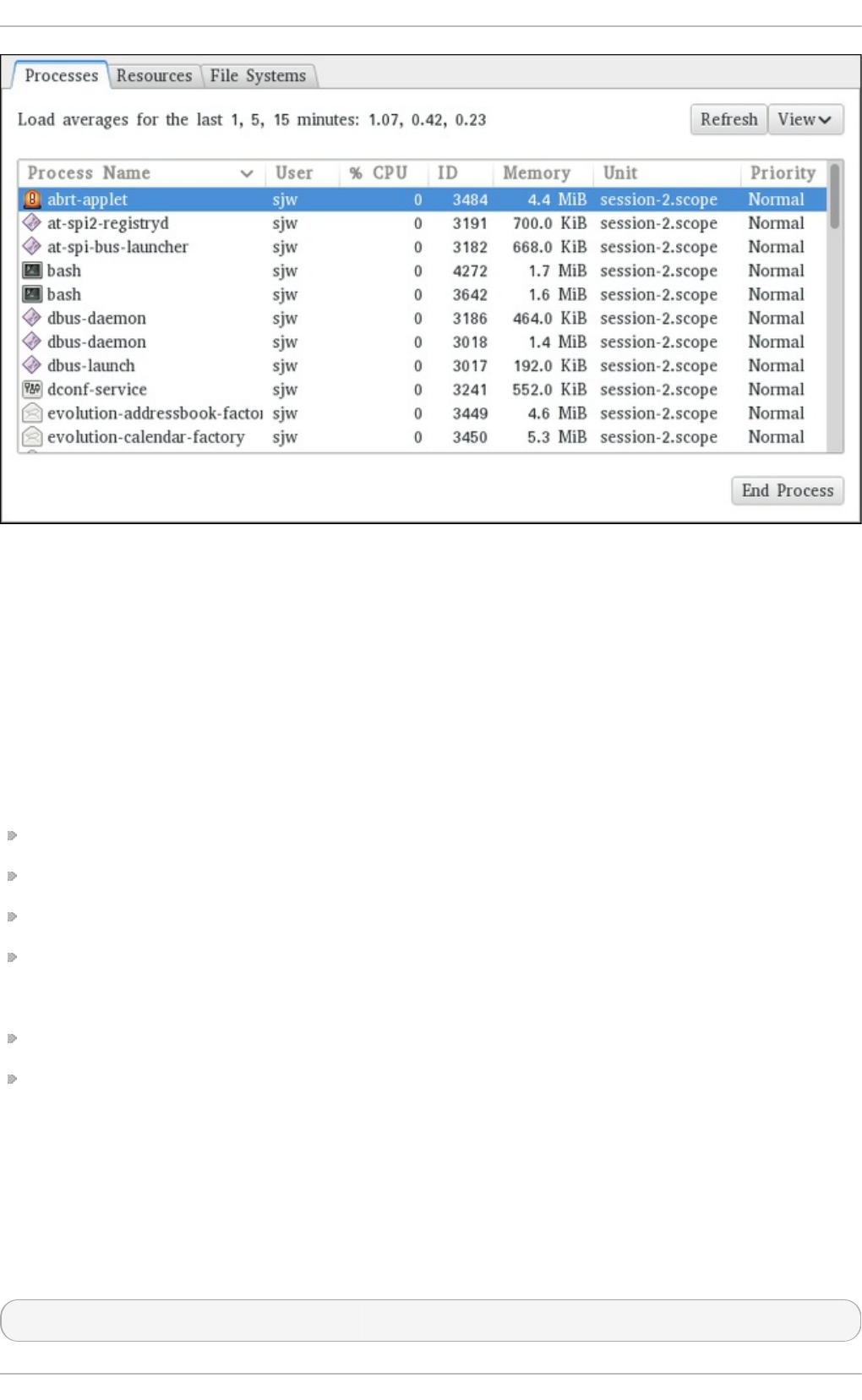

- 18.1. Viewing System Processes

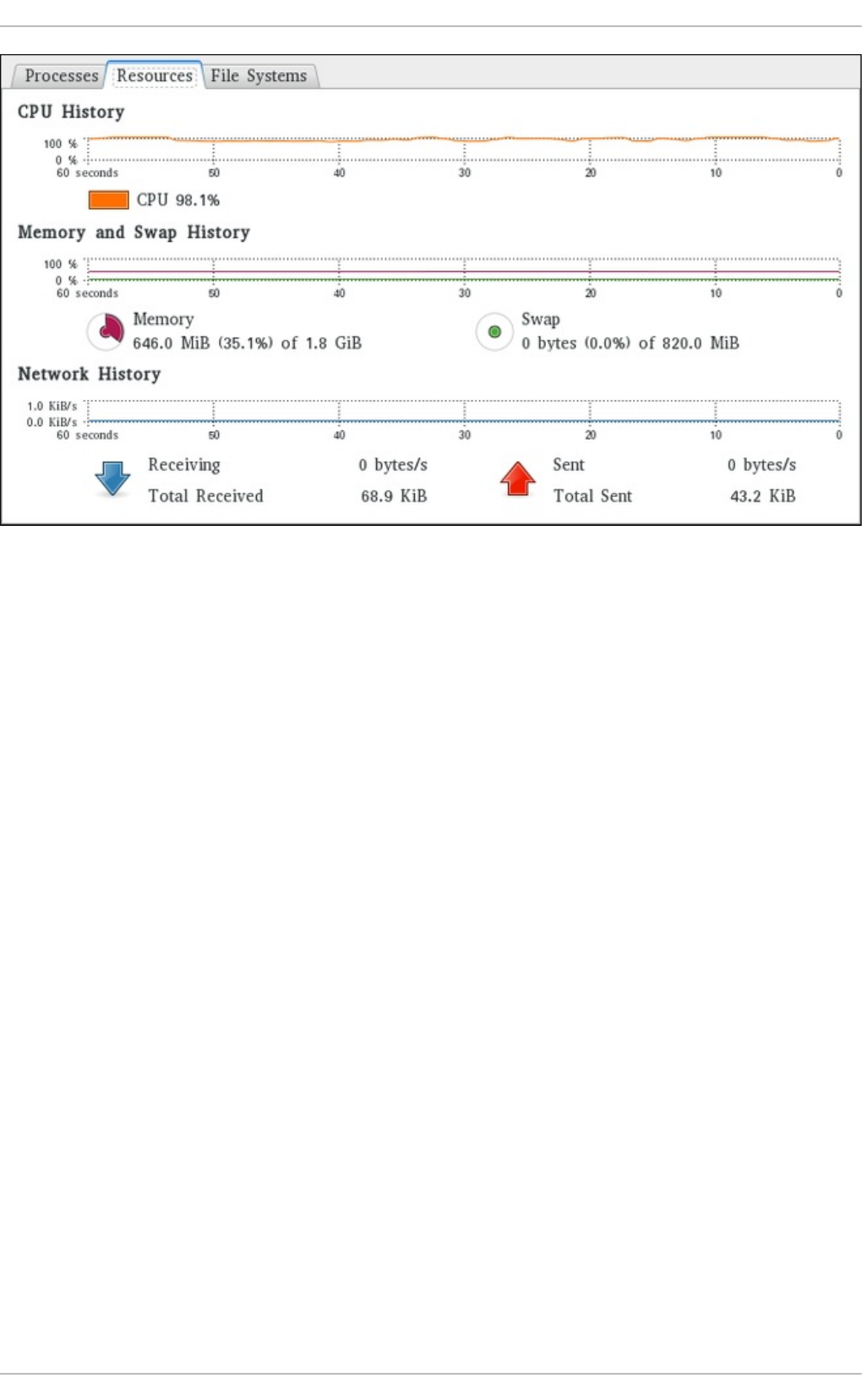

- 18.2. Viewing Memory Usage

- 18.3. Viewing CPU Usage

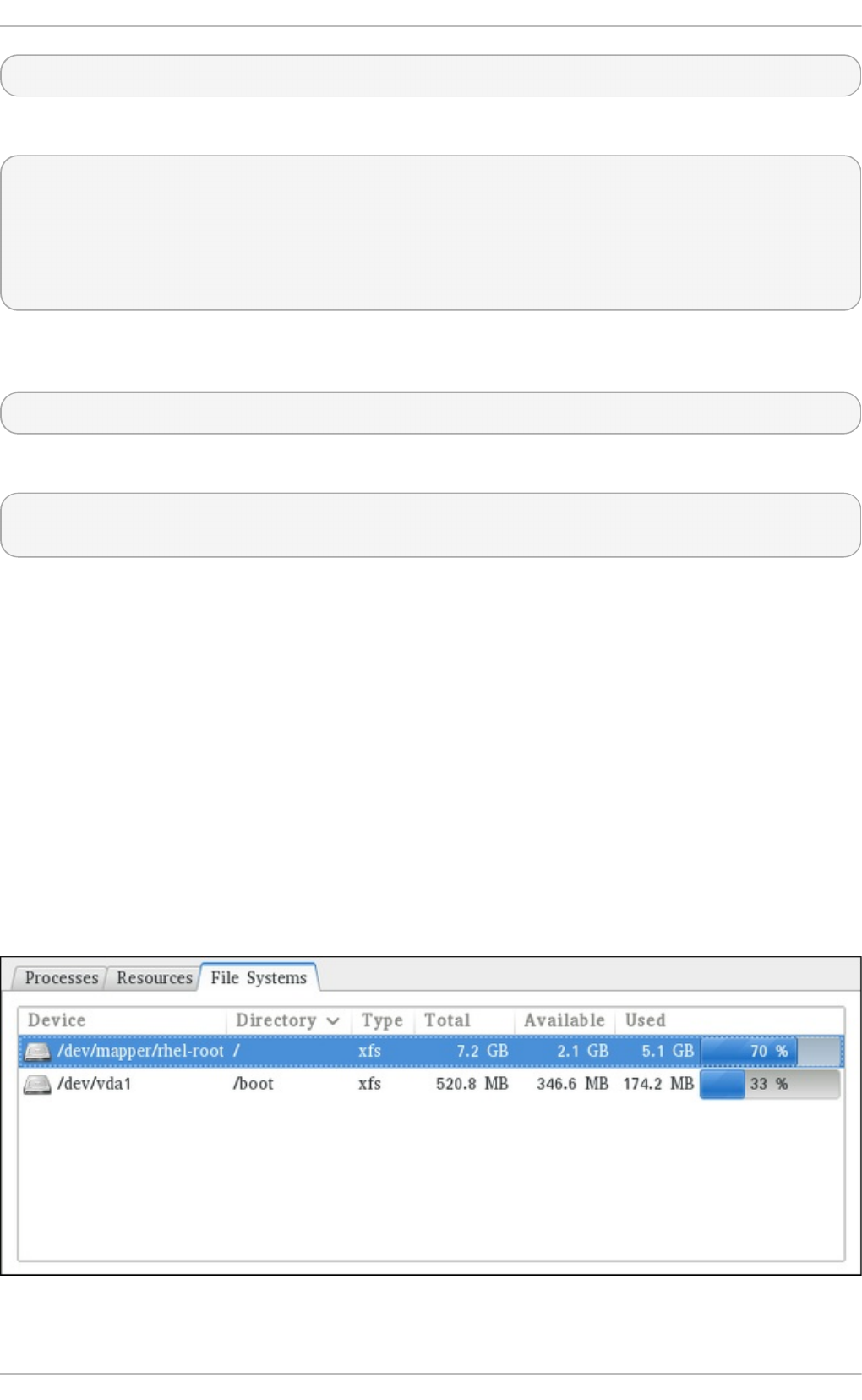

- 18.4. Viewing Block Devices and File Systems

- 18.5. Viewing Hardware Information

- 18.6. Checking for Hardware Errors

- 18.7. Monitoring Performance with Net-SNMP

- 18.8. Additional Resources

- Chapter 19. OpenLMI

- 19.1. About OpenLMI

- 19.2. Installing OpenLMI

- 19.3. Configuring SSL Certificates for OpenPegasus

- 19.4. Using LMIShell

- 19.4.1. Starting, Using, and Exiting LMIShell

- 19.4.2. Connecting to a CIMOM

- 19.4.3. Working with Namespaces

- 19.4.4. Working with Classes

- 19.4.5. Working with Instances

- 19.4.6. Working with Instance Names

- 19.4.7. Working with Associated Objects

- 19.4.8. Working with Association Objects

- 19.4.9. Working with Indications

- 19.4.10. Example Usage

- 19.5. Using OpenLMI Scripts

- 19.6. Additional Resources

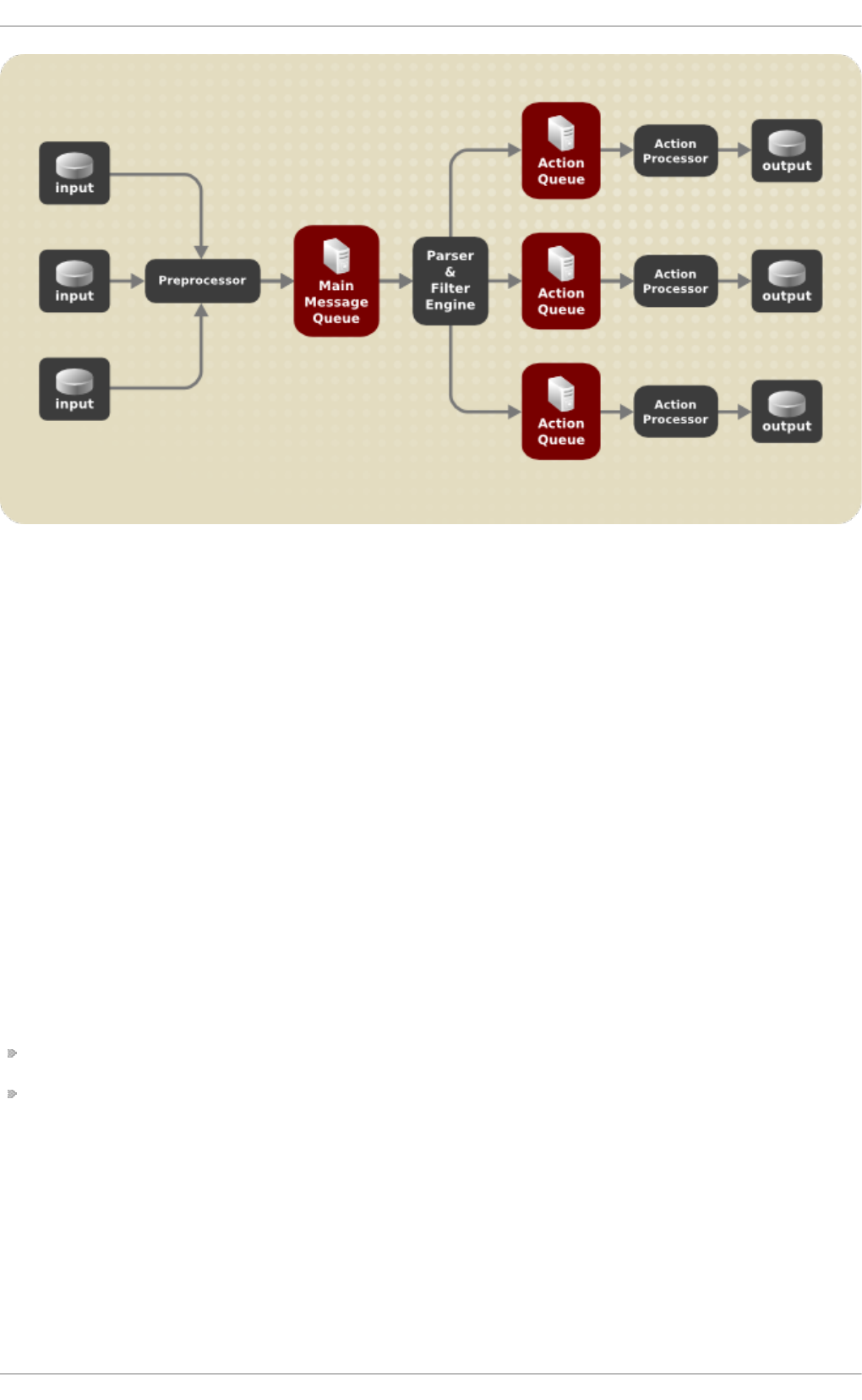

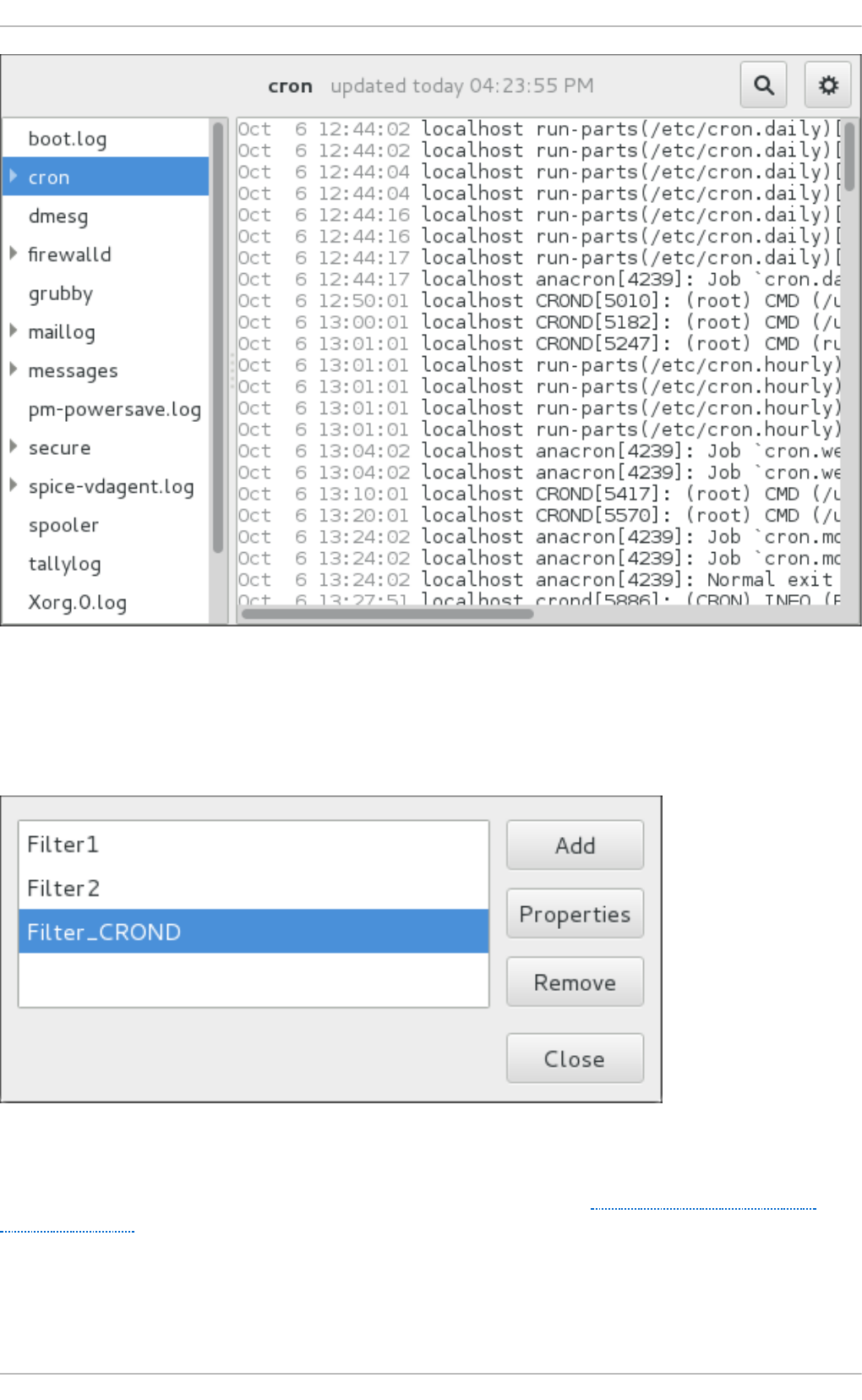

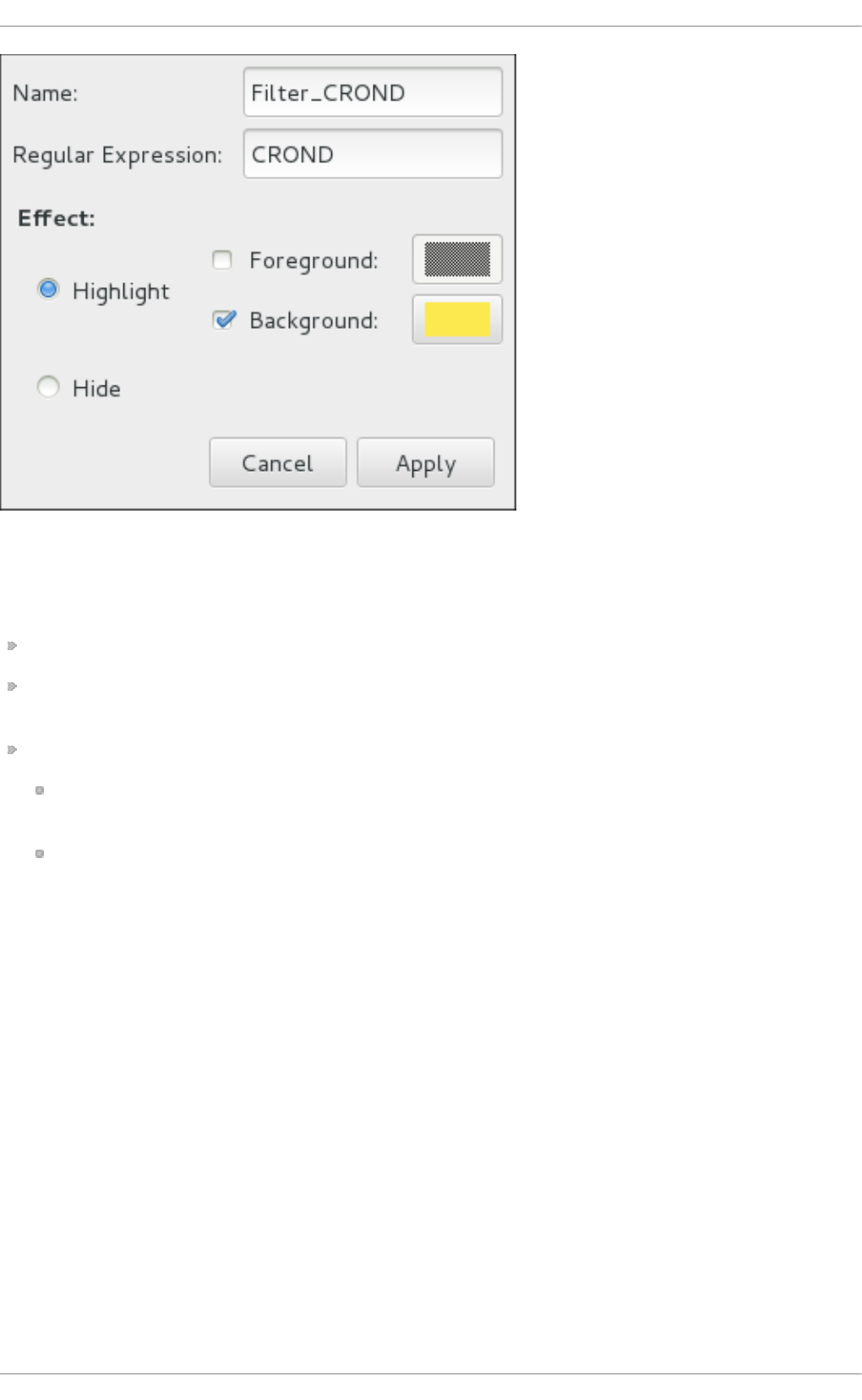

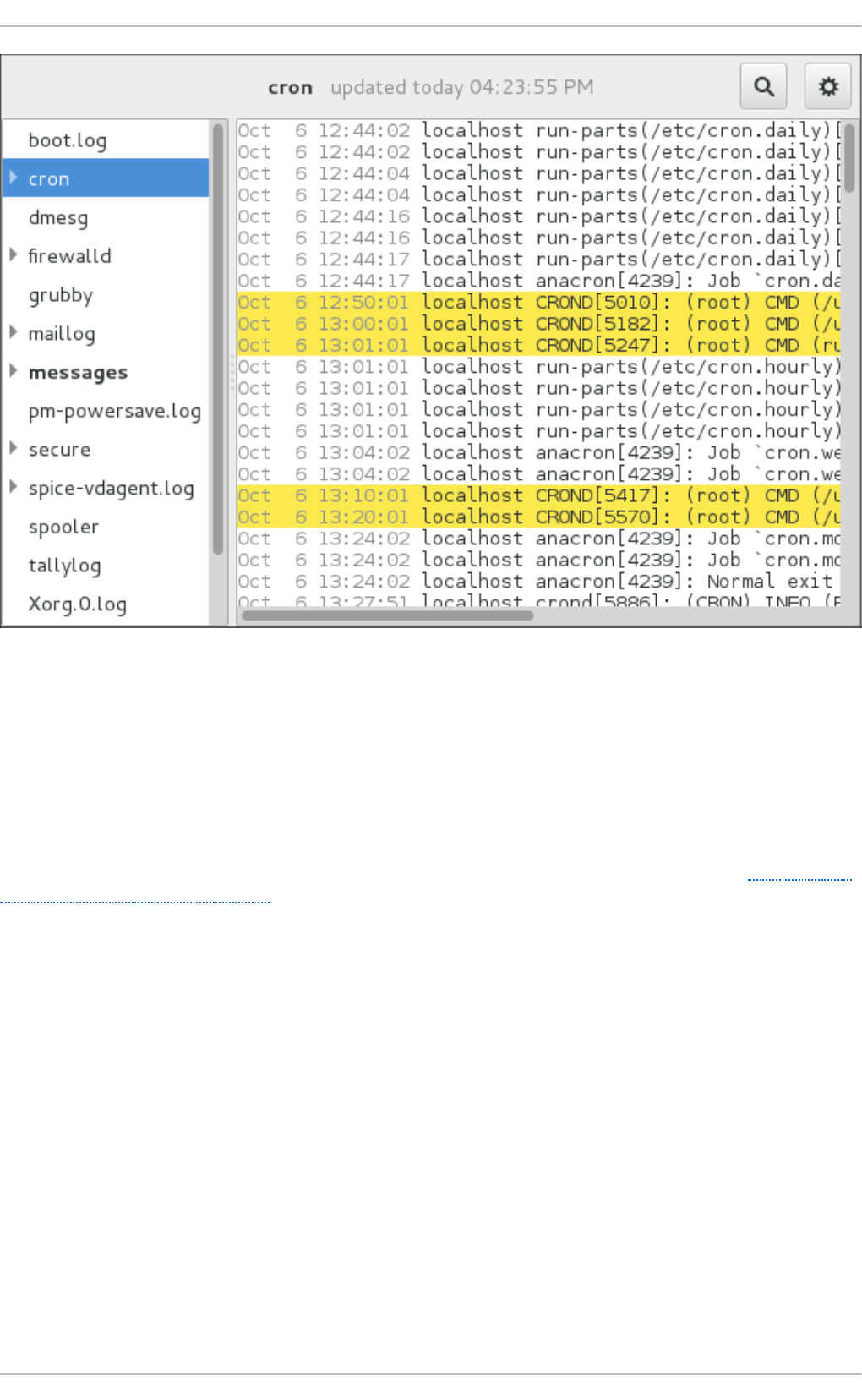

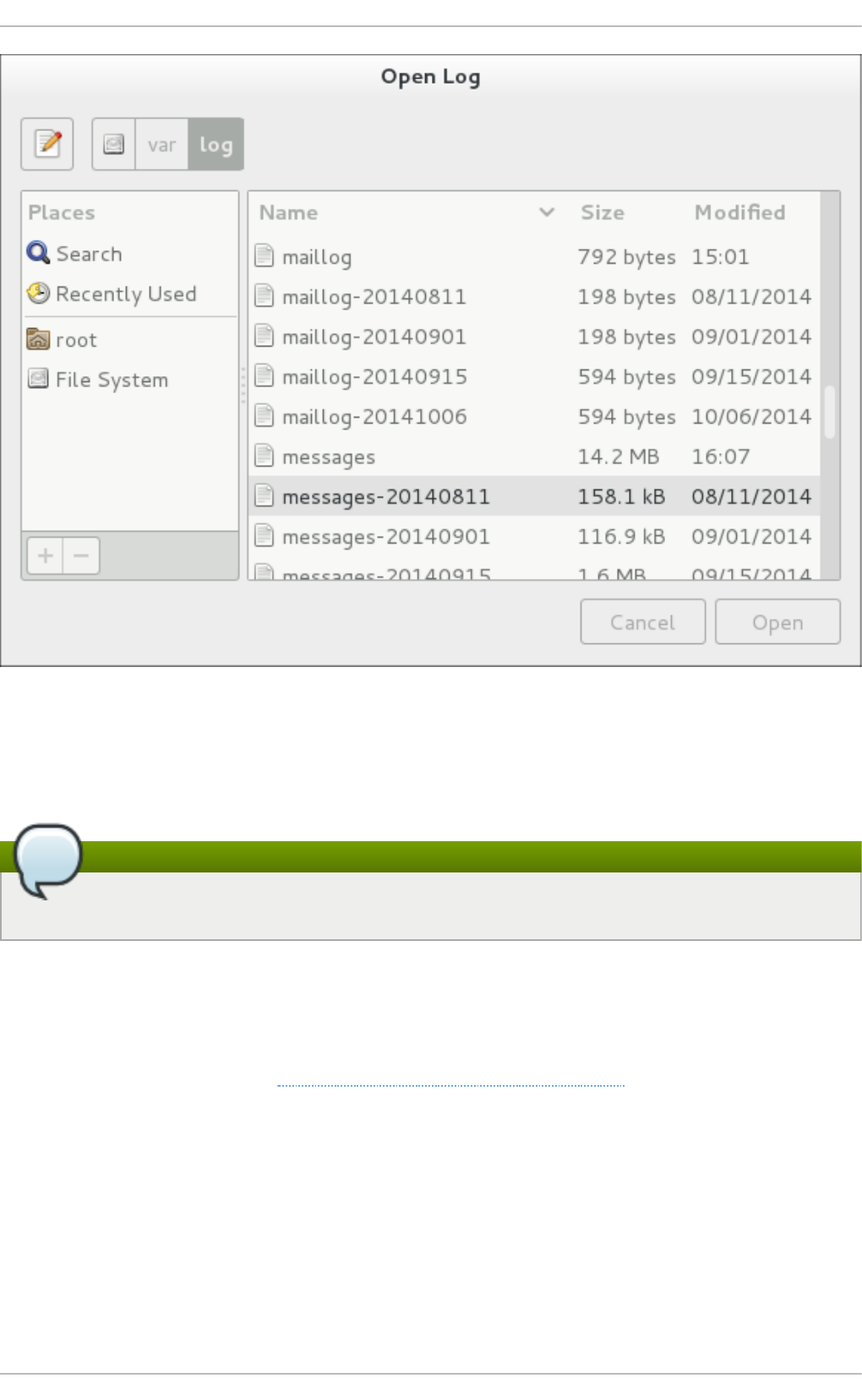

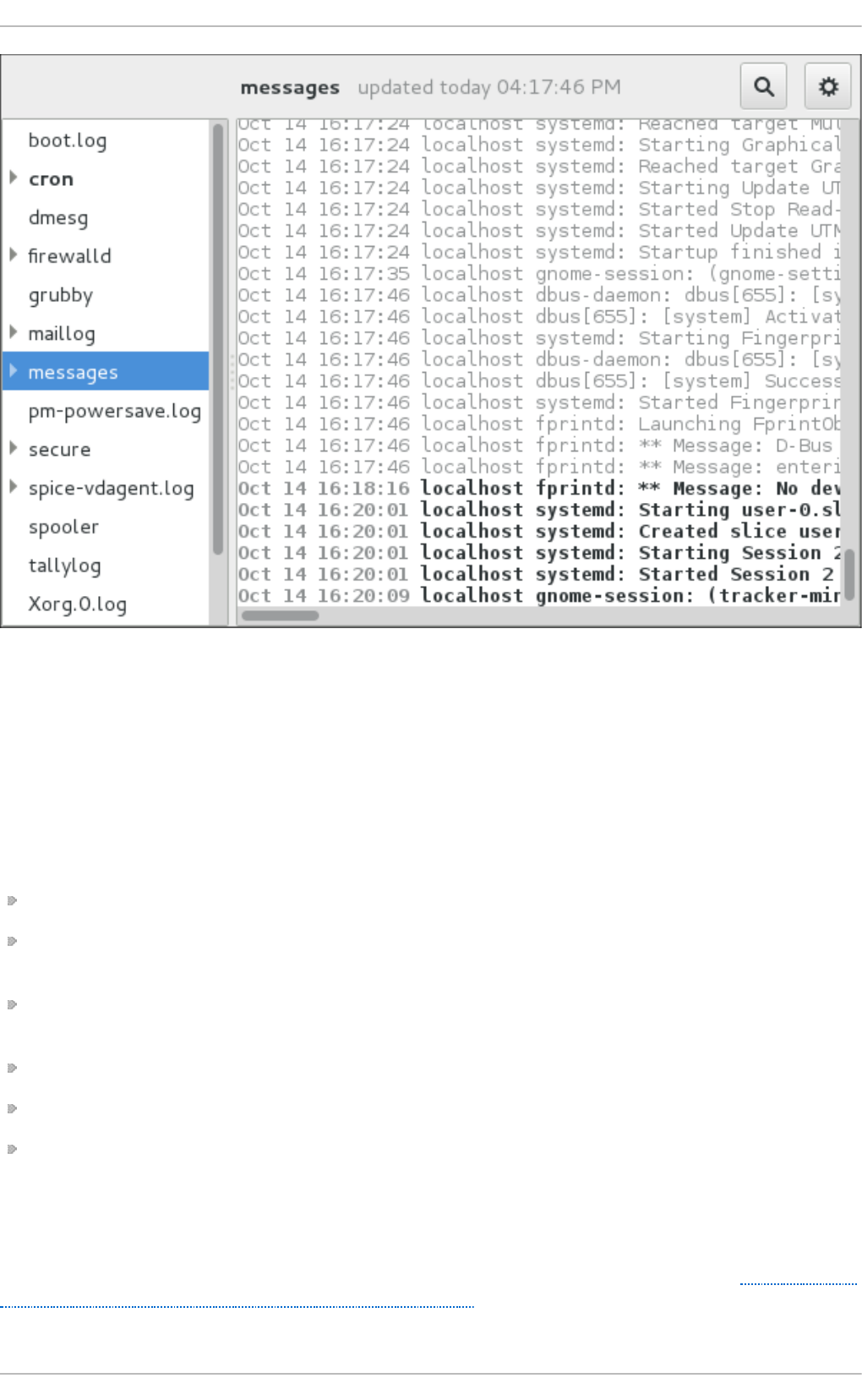

- Chapter 20. Viewing and Managing Log Files

- 20.1. Locating Log Files

- 20.2. Basic Configuration of Rsyslog

- 20.3. Using the New Configuration Format

- 20.4. Working with Queues in Rsyslog

- 20.5. Configuring rsyslog on a Logging Server

- 20.6. Using Rsyslog Modules

- 20.7. Interaction of Rsyslog and Journal

- 20.8. Structured Logging with Rsyslog

- 20.9. Debugging Rsyslog

- 20.10. Using the Journal

- 20.11. Managing Log Files in a Graphical Environment

- 20.12. Additional Resources

- Chapter 21. Automating System Tasks

- 21.1. Cron and Anacron

- 21.2. At and Batch

- 21.3. Scheduling a Job to Run on Next Boot Using a systemd Unit File

- 21.4. Additional Resources

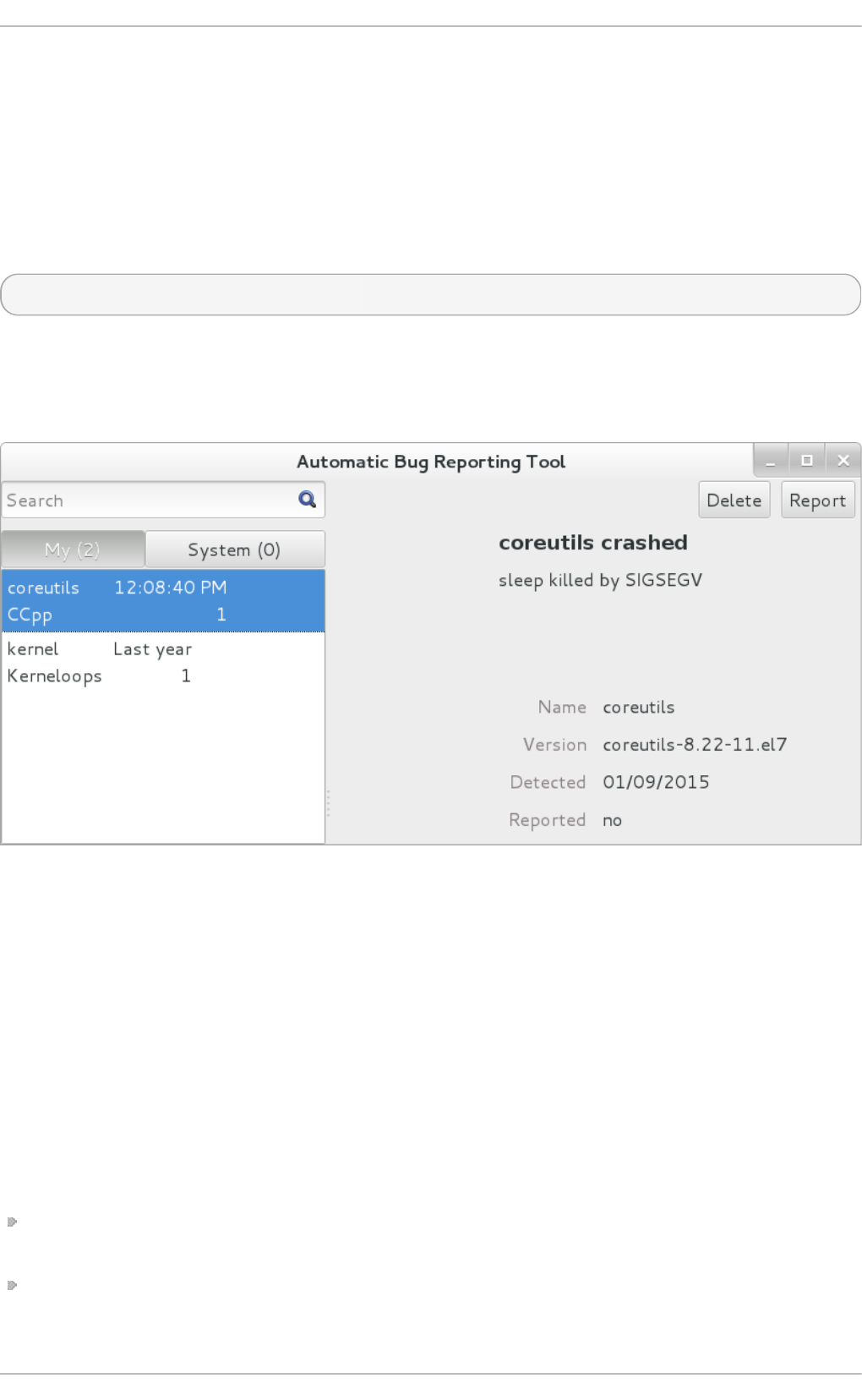

- Chapter 22. Automatic Bug Reporting Tool (ABRT)

- Chapter 23. OProfile

- 23.1. Overview of Tools

- 23.2. Using operf

- 23.3. Configuring OProfile Using Legacy Mode

- 23.4. Starting and Stopping OProfile Using Legacy Mode

- 23.5. Saving Data in Legacy Mode

- 23.6. Analyzing the Data

- 23.7. Understanding the /dev/oprofile/ directory

- 23.8. Example Usage

- 23.9. OProfile Support for Java

- 23.10. Graphical Interface

- 23.11. OProfile and SystemTap

- 23.12. Additional Resources

- Part VII. Kernel, Module and Driver Configuration

- Chapter 24. Working with the GRUB 2 Boot Loader

- 24.1. Introduction to GRUB 2

- 24.2. Configuring the GRUB 2 Boot Loader

- 24.3. Making Temporary Changes to a GRUB 2 Menu

- 24.4. Making Persistent Changes to a GRUB 2 Menu Using the grubby Tool

- 24.5. Customizing the GRUB 2 Configuration File

- 24.6. Protecting GRUB 2 with a Password

- 24.7. Reinstalling GRUB 2

- 24.8. GRUB 2 over a Serial Console

- 24.9. Terminal Menu Editing During Boot

- 24.10. Unified Extensible Firmware Interface (UEFI) Secure Boot

- 24.11. Additional Resources

- Chapter 25. Manually Upgrading the Kernel

- Chapter 26. Working with Kernel Modules

- 26.1. Listing Currently-Loaded Modules

- 26.2. Displaying Information About a Module

- 26.3. Loading a Module

- 26.4. Unloading a Module

- 26.5. Setting Module Parameters

- 26.6. Persistent Module Loading

- 26.7. Installing Modules from a Driver Update Disk

- 26.8. Signing Kernel Modules for Secure Boot

- 26.9. Additional Resources

- Part VIII. System Backup and Recovery

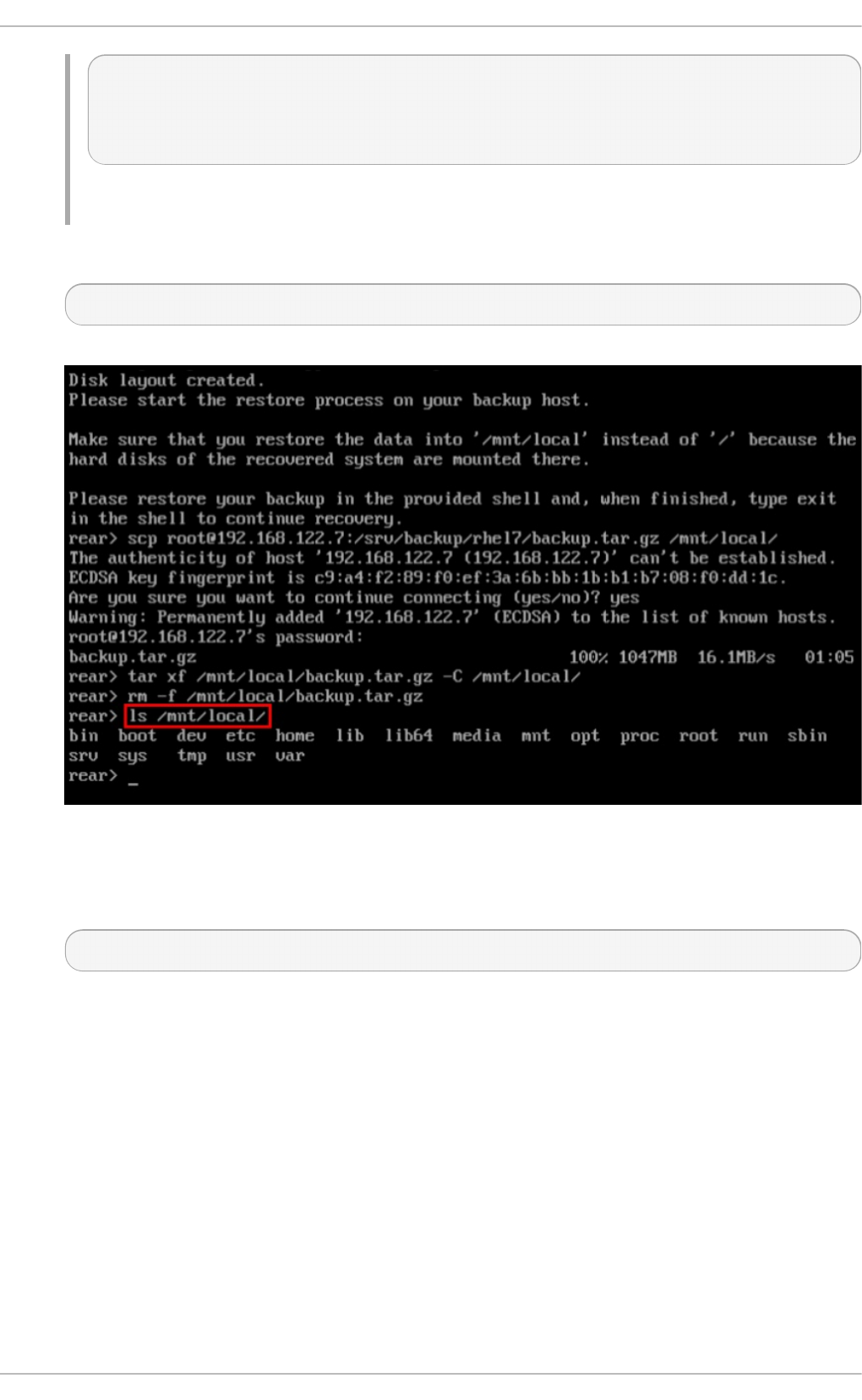

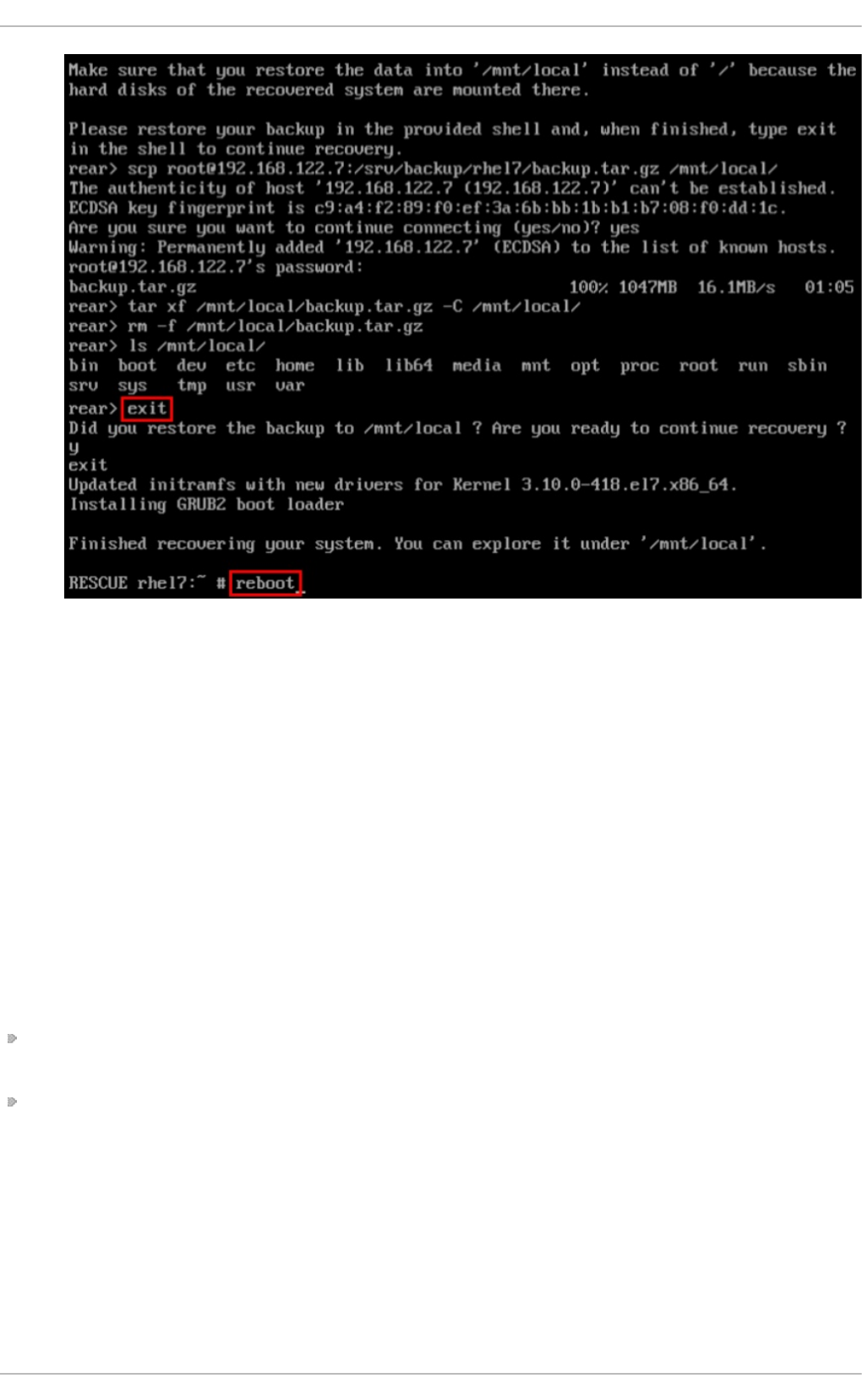

- Chapter 27. Relax-and-Recover (ReaR)

- Appendix A. RPM

- Appendix B. Revision History

- Index

Maxim Svistunov Marie Doleželová Stephen Wadeley

Tomáš Čapek Jaromír Hradílek Douglas Silas

Jana Heves Petr Kovář Peter Ondrejka

Petr Bokoč Martin Prpič Eliška Slobodová

Eva Kopalová Miroslav Svoboda David O'Brien

Michael Hideo Don Domingo John Ha

Red Hat Enterprise Linux 7

System Administrator's Guide

Deployment, Configuration, and Administration of Red Hat Enterprise Linux

7

Red Hat Enterprise Linux 7 System Administrator's Guide

Deployment, Configuration, and Administration of Red Hat Enterprise Linux

7

Maxim Svistunov

Red Hat Customer Content Services

msvistun@redhat.com

Marie Doleželová

Red Hat Customer Content Services

mdolezel@redhat.com

Stephen Wadeley

Red Hat Customer Content Services

swadeley@redhat.com

Tomáš Čapek

Red Hat Customer Content Services

tcapek@redhat.com

Jaromír Hradílek

Red Hat Customer Content Services

Douglas Silas

Red Hat Customer Content Services

Jana Heves

Red Hat Customer Content Services

Petr Kovář

Red Hat Customer Content Services

Peter Ondrejka

Red Hat Customer Content Services

Petr Bokoč

Red Hat Customer Content Services

Martin Prpič

Red Hat Product Security

Eliška Slobo dová

Red Hat Customer Content Services

Eva Kopalová

Red Hat Customer Content Services

Miroslav Svoboda

Red Hat Customer Content Services

David O'Brien

Red Hat Customer Content Services

Michael Hideo

Red Hat Customer Content Services

Don Domingo

Red Hat Customer Content Services

John Ha

Red Hat Customer Content Services

Legal Notice

Copyright © 2016 Red Hat, Inc.

This document is licensed by Red Hat under the Creative Co mmo ns Attribution-ShareAlike 3.0

Unported License. If you distribute this document, or a modified version of it, you must provide

attribution to Red Hat, Inc. and provide a link to the original. If the document is modified, all Red

Hat trademarks must be removed.

Red Hat, as the licensor of this document, waives the right to enforce, and agrees not to assert,

Section 4d of CC-BY-SA to the fullest extent permitted by applicable law.

Red Hat, Red Hat Enterprise Linux, the Shadowman logo, JBoss, OpenShift, Fedora, the Infinity

logo, and RHCE are trademarks of Red Hat, Inc., registered in the United States and other

countries.

Linux ® is the registered trademark of Linus Torvalds in the United States and other countries.

Java ® is a registered trademark of Oracle and/or its affiliates.

XFS ® is a trademark of Silicon Graphics International Corp. or its subsidiaries in the United

States and/or other countries.

MySQL ® is a registered trademark of MySQL AB in the United States, the European Union and

other co untries.

Node.js ® is an official trademark of Joyent. Red Hat Software Collections is not formally

related to or endorsed by the official Joyent Node.js open source or commercial project.

The OpenStack ® Word Mark and OpenStack logo are either registered trademarks/service

marks or trademarks/service marks of the OpenStack Foundation, in the United States and other

countries and are used with the OpenStack Foundation's permission. We are not affiliated with,

endorsed or sponsored by the OpenStack Foundation, or the OpenStack community.

All other trademarks are the pro perty of their respective owners.

Abstract

The System Administrator's Guide documents relevant information regarding the deployment,

configuration, and administration of Red Hat Enterprise Linux 7. It is oriented towards system

administrators with a basic understanding of the system. To expand your expertise, you might

also be interested in the Red Hat System Administration I (RH124), Red Hat System

Administration II (RH134), Red Hat System Administration III (RH254), or RHCSA Rapid Track

(RH199) training courses.

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

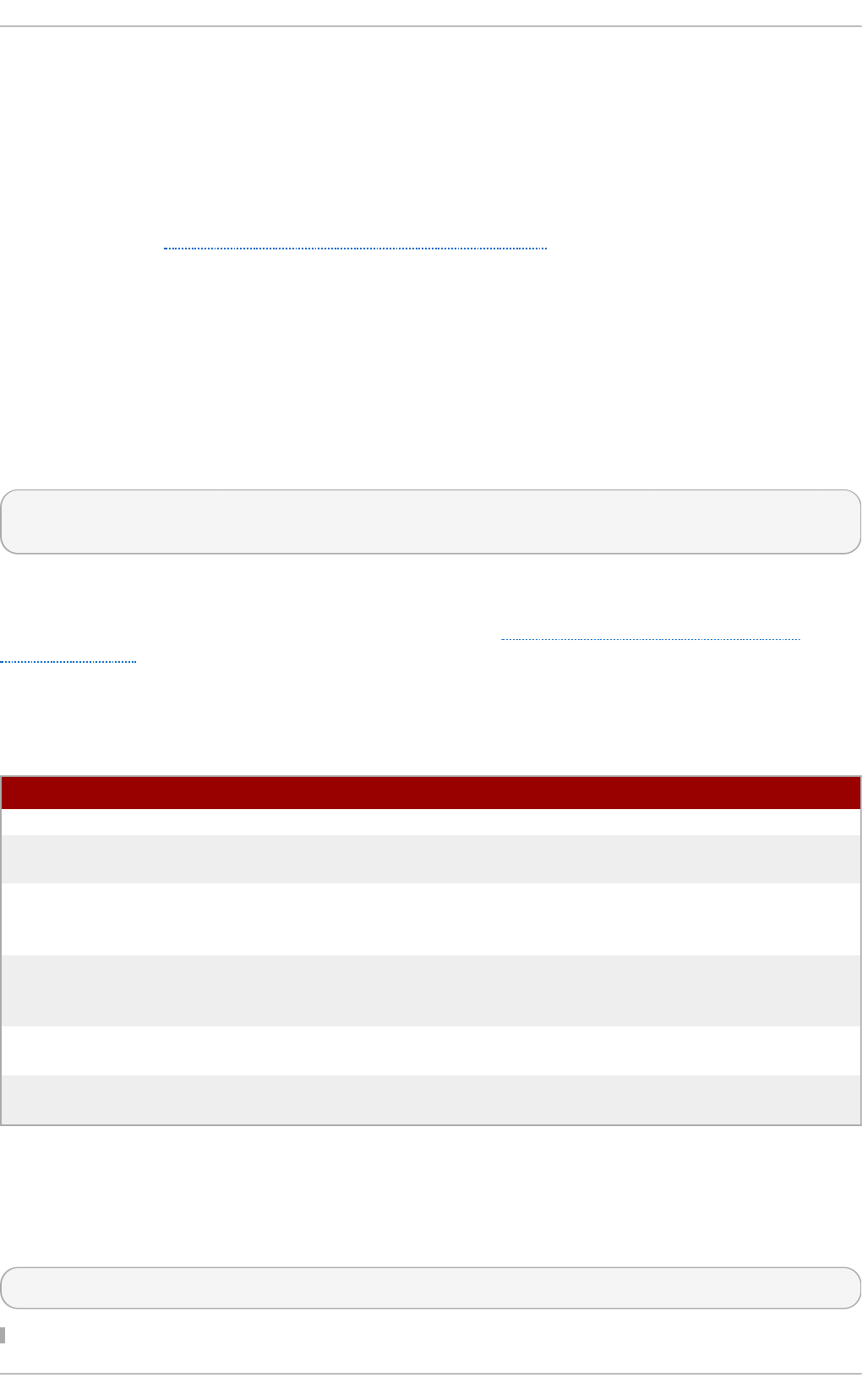

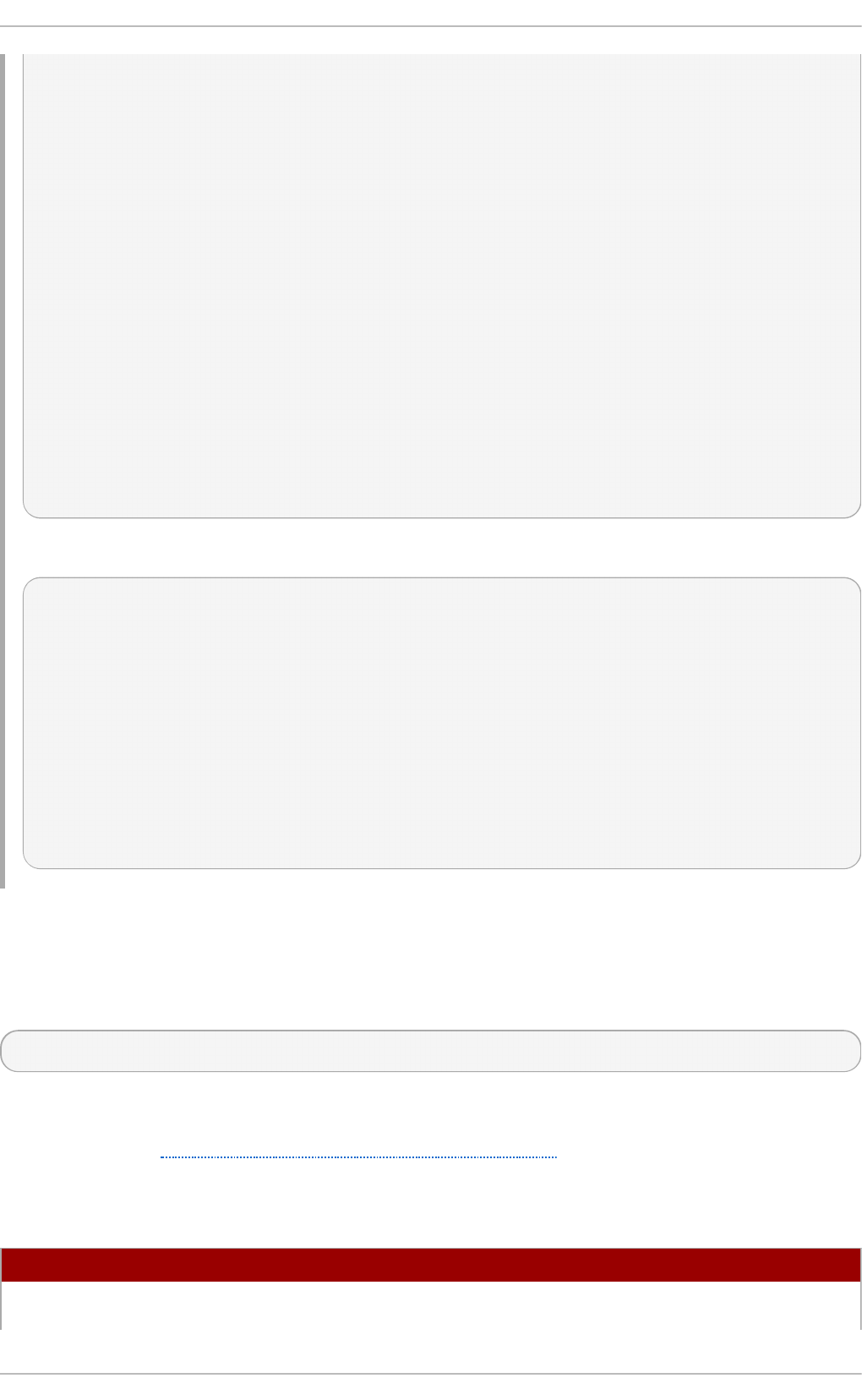

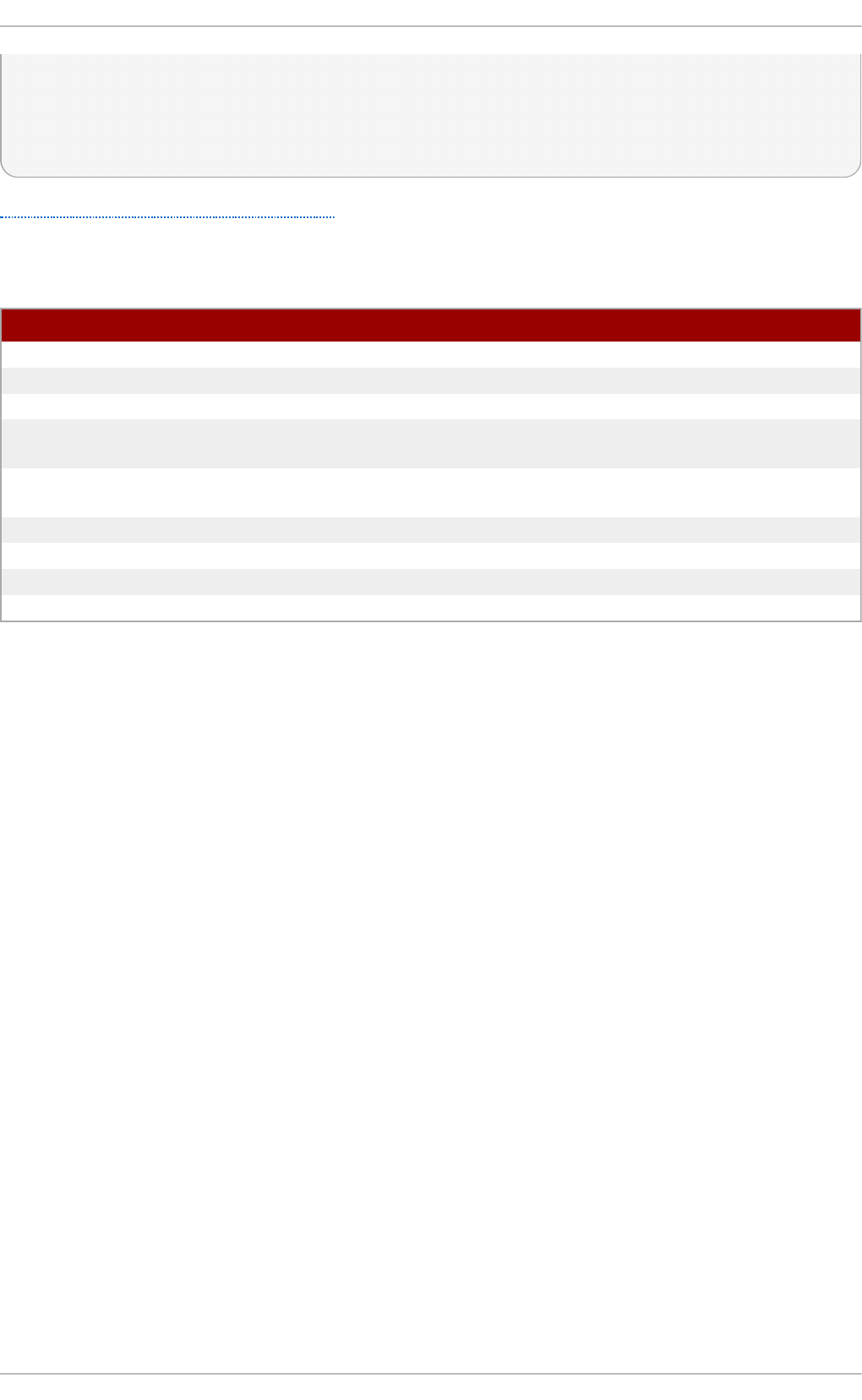

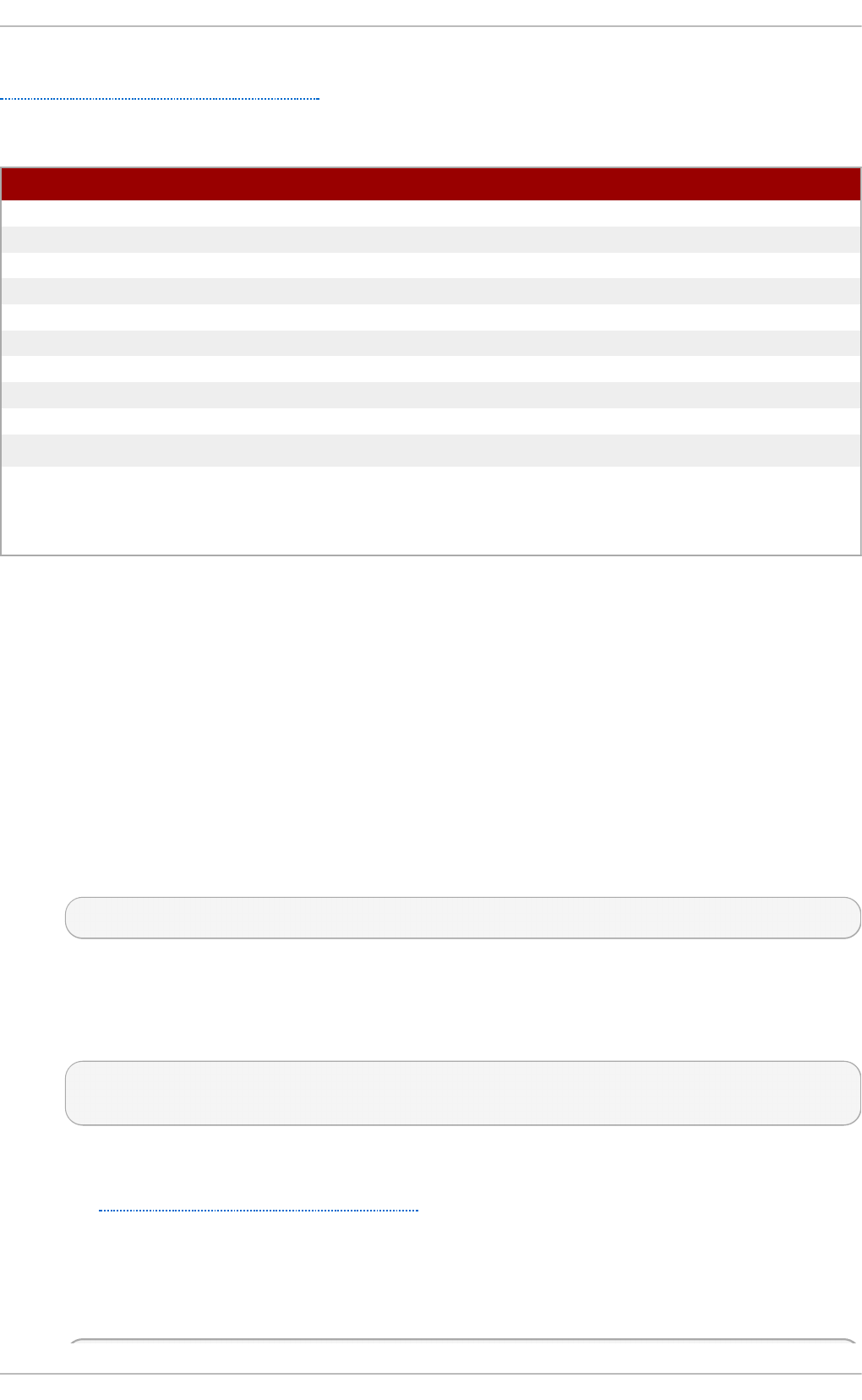

Table of Contents

Part I. Basic Syst em Configurat ion

Chapt er 1 . Syst em Locale and Keyboard Configurat ion

1.1. Setting the System Lo cale

1.2. Chang ing the Keyb o ard Layo ut

1.3. Ad d itio nal Reso urces

Chapt er 2 . Configuring t he Dat e and T ime

2.1. Using the timed atectl Co mmand

2.2. Using the d ate Co mmand

2.3. Using the hwclo ck Co mmand

2.4. Ad d itio nal Reso urces

Chapt er 3. Managing Users and G roups

3.1. Intro d uctio n to Users and Gro up s

3.2. Manag ing Users in a G rap hical Enviro nment

3.3. Using Co mmand -Line To o ls

3.4. Ad d itio nal Reso urces

Chapt er 4 . Access Cont rol List s

4.1. Mo unting File Systems

4.2. Setting Access ACLs

4.3. Setting Default ACLs

4.4. Retrieving ACLs

4.5. Archiving File Systems With ACLs

4.6 . Co mp atib ility with Old er Systems

4.7. ACL References

Chapt er 5. G aining Privileges

5.1. The su Co mmand

5.2. The sud o Co mmand

5.3. Ad d itio nal Reso urces

Part II. Subscript ion and Support

Chapt er 6 . Regist ering t he Syst em and Managing Subscript ions

6 .1. Reg istering the System and Attaching Sub scrip tio ns

6 .2. Manag ing So ftware Rep o sito ries

6 .3. Remo ving Sub scrip tio ns

6 .4. Ad d itio nal Reso urces

Chapt er 7 . Accessing Support Using t he Red Hat Support T ool

7.1. Installing the Red Hat Sup p o rt To o l

7.2. Reg istering the Red Hat Sup p o rt To o l Using the Co mmand Line

7.3. Using the Red Hat Sup p o rt To o l in Interactive Shell Mo d e

7.4. Co nfig uring the Red Hat Sup p o rt To o l

7.5. Op ening and Up d ating Sup p o rt Cases Using Interactive Mo d e

7.6 . Viewing Sup p o rt Cases o n the Co mmand Line

7.7. Ad d itio nal Reso urces

Part III. Inst alling and Managing Soft ware

Chapt er 8 . Yum

8 .1. Checking Fo r and Up d ating Packag es

8 .2. Wo rking with Packag es

6

7

7

9

10

1 2

12

15

17

19

2 1

21

22

24

32

34

34

34

36

36

36

37

37

39

39

40

41

4 3

4 4

44

45

45

46

4 7

47

47

47

47

49

51

51

52

53

53

59

T able of Cont ent s

1

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

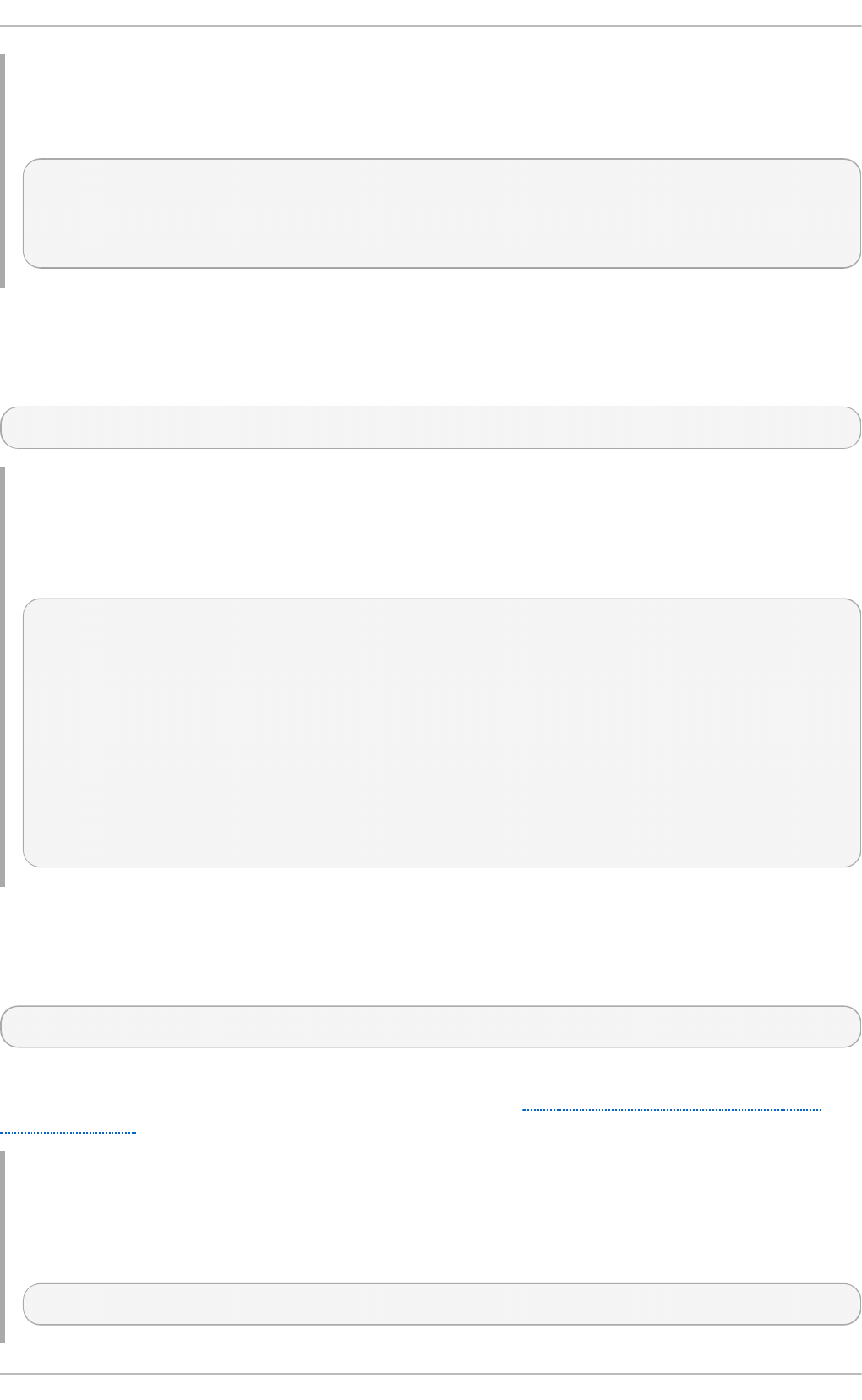

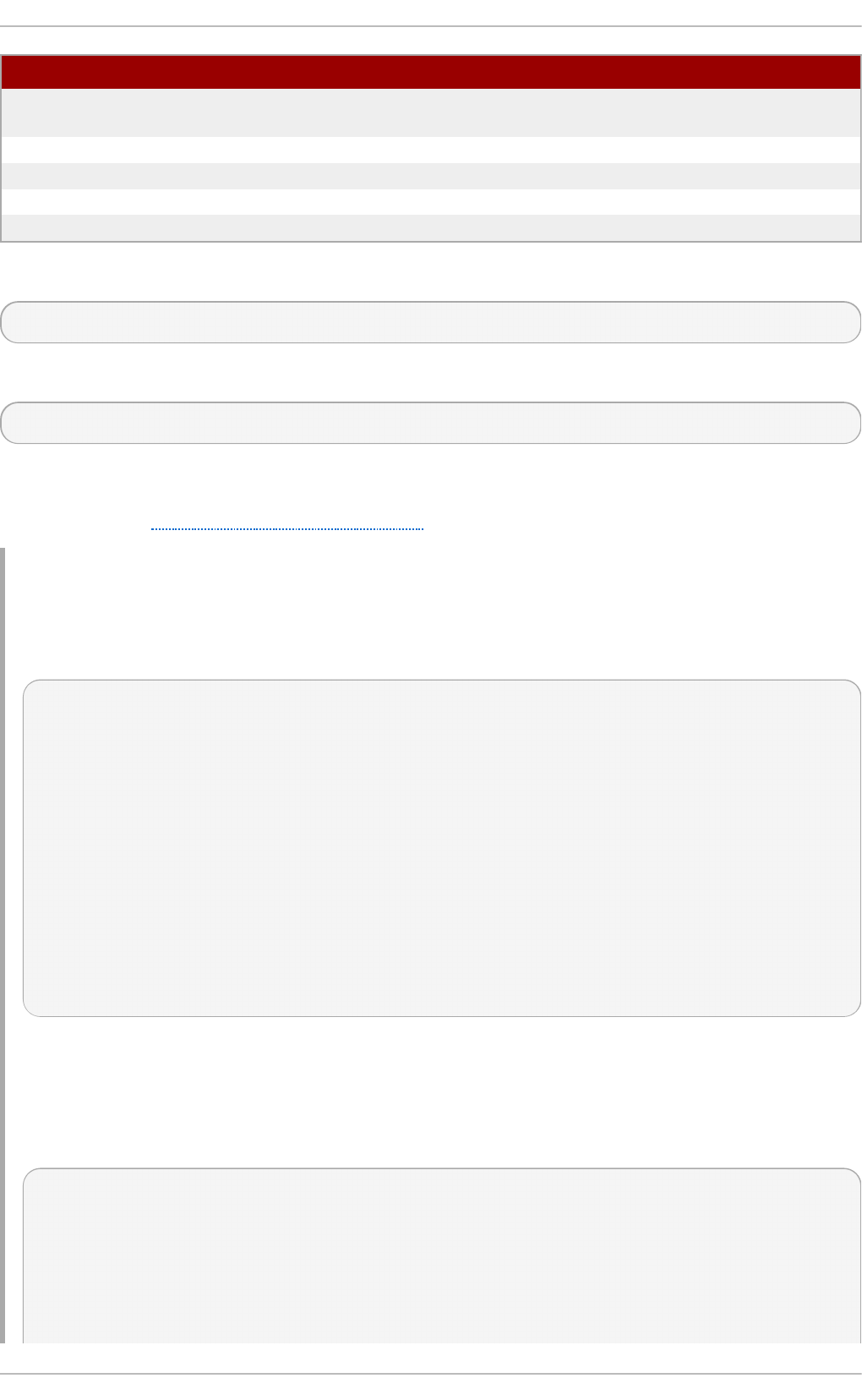

8 .2. Wo rking with Packag es

8 .3. Wo rking with Packag e Gro up s

8 .4. Wo rking with Transactio n Histo ry

8 .5. Co nfig uring Yum and Yum Rep o sito ries

8 .6 . Yum Plug -ins

8 .7. Ad d itio nal Reso urces

Part IV. Infrast ruct ure Services

Chapt er 9 . Managing Services wit h syst emd

9 .1. Intro d uctio n to systemd

9 .2. Manag ing System Services

9 .3. Wo rking with systemd Targ ets

9 .4. Shutting Do wn, Susp end ing , and Hib ernating the System

9 .5. Co ntro lling systemd o n a Remo te Machine

9 .6 . Creating and Mo d ifying systemd Unit Files

9 .7. Ad d itio nal Reso urces

Chapt er 1 0 . OpenSSH

10 .1. The SSH Pro to co l

10 .2. Co nfig uring O p enSSH

10 .3. O p enSSH Clients

10 .4. Mo re Than a Secure Shell

10 .5. Ad d itio nal Reso urces

Chapt er 1 1 . T igerVNC

11.1. VNC Server

11.2. Sharing an Existing Deskto p

11.3. VNC Viewer

11.4. Ad d itio nal Reso urces

Part V. Servers

Chapt er 1 2 . Web Servers

12.1. The Ap ache HTTP Server

Chapt er 1 3. Mail Servers

13.1. Email Pro to co ls

13.2. Email Pro g ram Classificatio ns

13.3. Mail Transp o rt Ag ents

13.4. Mail Delivery Ag ents

13.5. Mail User Ag ents

13.6 . Ad d itio nal Reso urces

Chapt er 1 4 . File and Print Servers

14.1. Samb a

14.2. FTP

14.3. Print Setting s

Chapt er 1 5. Configuring NT P Using t he chrony Suit e

15.1. Intro d uctio n to the chro ny Suite

15.2. Und erstand ing chro ny and Its Co nfig uratio n

15.3. Using chro ny

15.4. Setting Up chro ny fo r Different Enviro nments

15.5. Using chro nyc

15.6 . Ad d itio nal Reso urces

59

6 8

71

78

8 9

9 3

9 4

9 5

9 5

9 7

10 5

10 9

111

112

127

1 30

130

133

141

144

145

147

147

151

152

155

1 56

1 57

157

182

18 2

18 5

18 6

19 8

20 5

20 6

209

20 9

223

229

249

249

250

256

26 1

26 2

26 3

Syst em Administ rat or's Guide

2

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

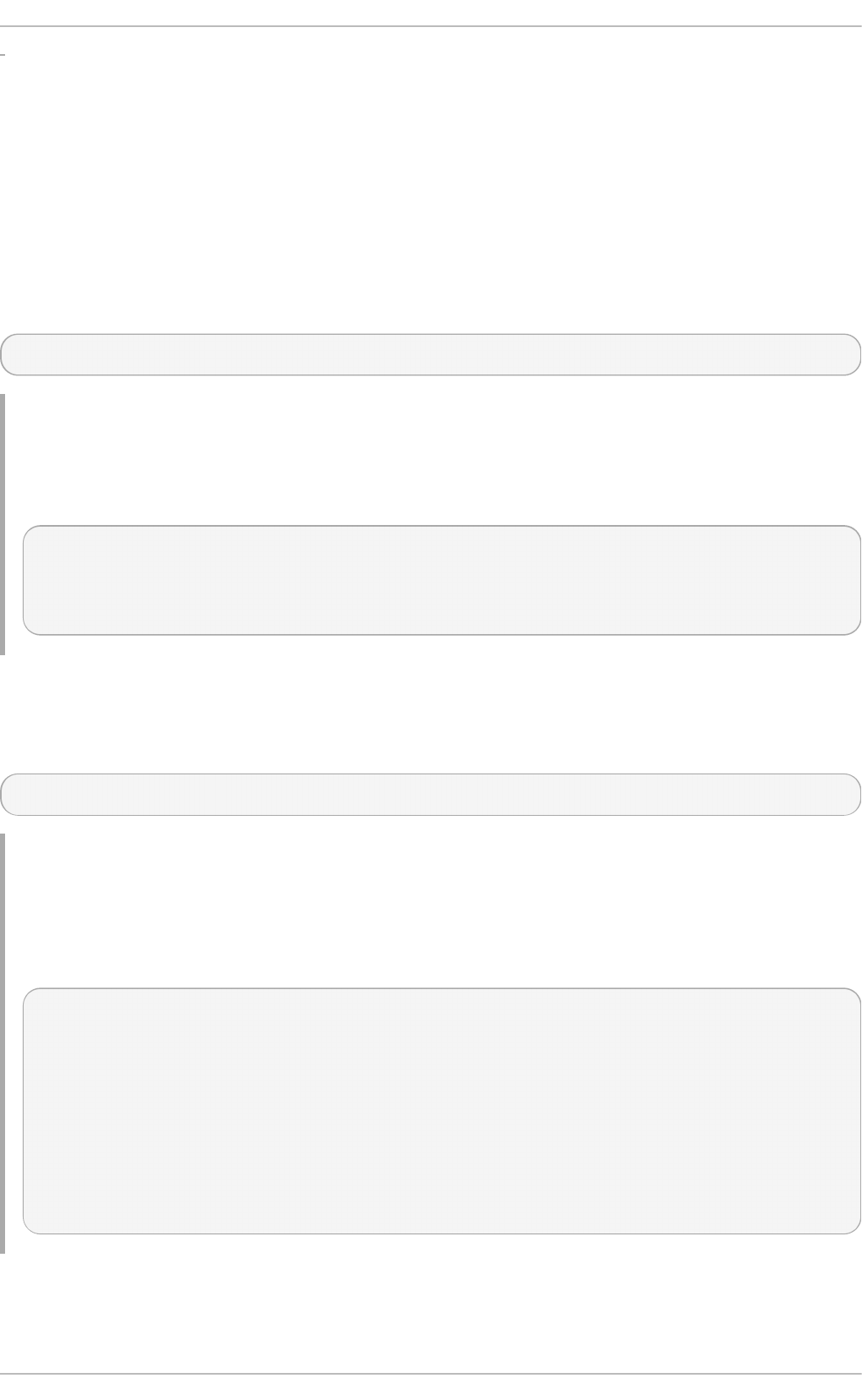

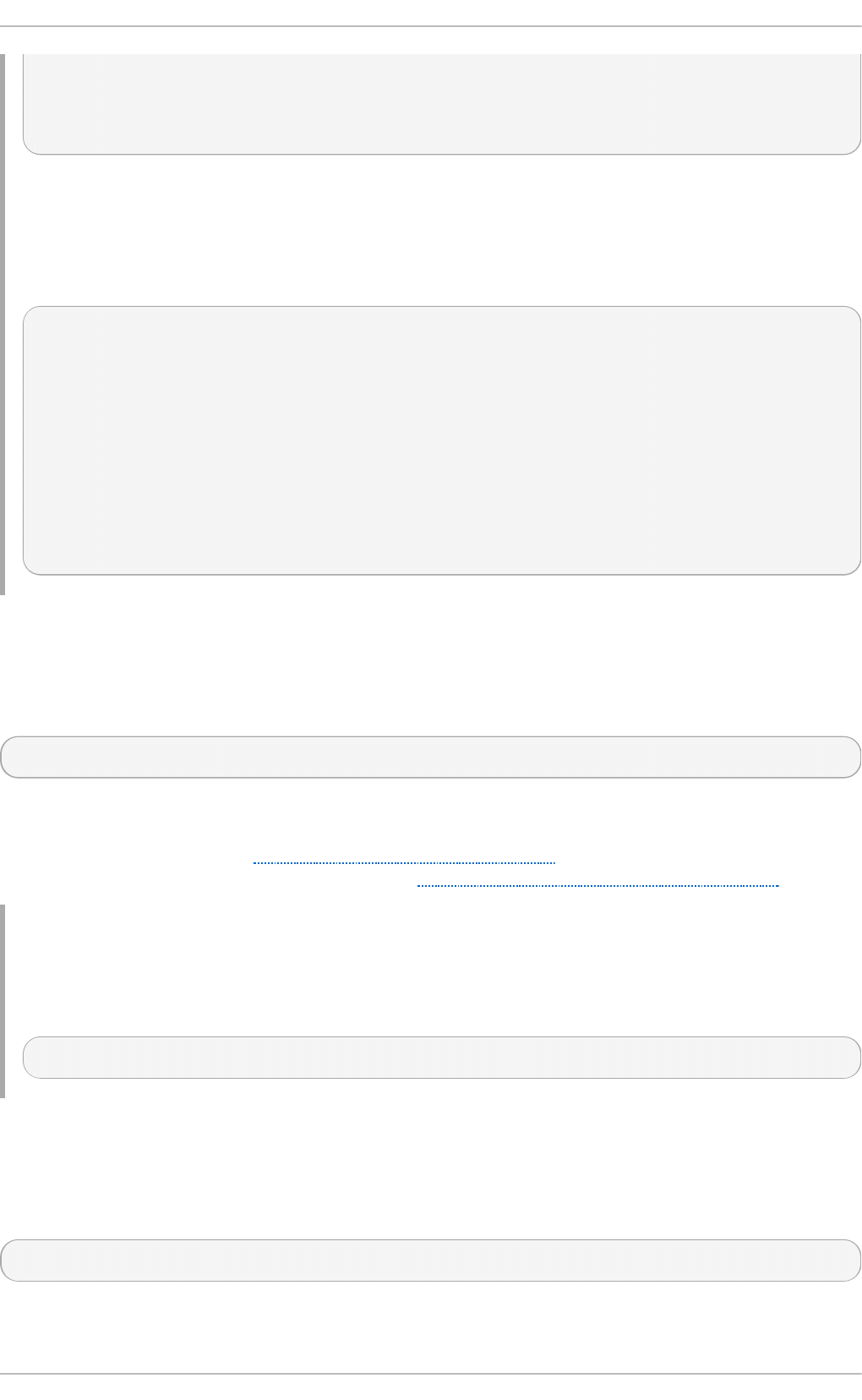

Chapt er 1 6 . Configuring NT P Using nt pd

16 .1. Intro d uctio n to NTP

16 .2. NTP Strata

16 .3. Und erstand ing NTP

16 .4. Und erstand ing the Drift File

16 .5. UTC, Timezo nes, and DST

16 .6 . Authenticatio n Op tio ns fo r NTP

16 .7. Manag ing the Time o n Virtual Machines

16 .8 . Und erstand ing Leap Seco nd s

16 .9 . Und erstand ing the ntp d Co nfig uratio n File

16 .10 . Und erstand ing the ntp d Sysco nfig File

16 .11. Disab ling chro ny

16 .12. Checking if the NTP Daemo n is Installed

16 .13. Installing the NTP Daemo n (ntp d )

16 .14. Checking the Status o f NTP

16 .15. Co nfig ure the Firewall to Allo w Inco ming NTP Packets

16 .16 . Co nfig ure ntp d ate Servers

16 .17. Co nfig ure NTP

16 .18 . Co nfig uring the Hard ware Clo ck Up d ate

16 .19 . Co nfig uring Clo ck So urces

16 .20 . Ad d itio nal Reso urces

Chapt er 1 7 . Configuring PT P Using pt p4 l

17.1. Intro d uctio n to PTP

17.2. Using PTP

17.3. Using PTP with Multip le Interfaces

17.4. Sp ecifying a Co nfig uratio n File

17.5. Using the PTP Manag ement Client

17.6 . Synchro nizing the Clo cks

17.7. Verifying Time Synchro nizatio n

17.8 . Serving PTP Time with NTP

17.9 . Serving NTP Time with PTP

17.10 . Synchro nize to PTP o r NTP Time Using timemaster

17.11. Imp ro ving Accuracy

17.12. Ad d itio nal Reso urces

Part VI. Monit oring and Aut omat ion

Chapt er 1 8 . Syst em Monit oring T ools

18 .1. Viewing System Pro cesses

18 .2. Viewing Memo ry Usag e

18 .3. Viewing CPU Usag e

18 .4. Viewing Blo ck Devices and File Systems

18 .5. Viewing Hard ware Info rmatio n

18 .6 . Checking fo r Hard ware Erro rs

18 .7. Mo nito ring Perfo rmance with Net-SNMP

18 .8 . Ad d itio nal Reso urces

Chapt er 1 9 . OpenLMI

19 .1. Ab o ut Op enLMI

19 .2. Installing Op enLMI

19 .3. Co nfig uring SSL Certificates fo r Op enPeg asus

19 .4. Using LMIShell

19 .5. Using Op enLMI Scrip ts

19 .6 . Ad d itio nal Reso urces

265

26 5

26 5

26 6

26 7

26 7

26 7

26 8

26 8

26 8

270

270

271

271

271

271

272

273

278

279

28 0

282

28 2

28 4

28 7

28 7

28 7

28 8

28 9

29 1

29 2

29 2

29 6

29 6

298

299

29 9

30 2

30 4

30 4

310

312

314

327

32 9

329

330

331

336

374

374

T able of Cont ent s

3

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

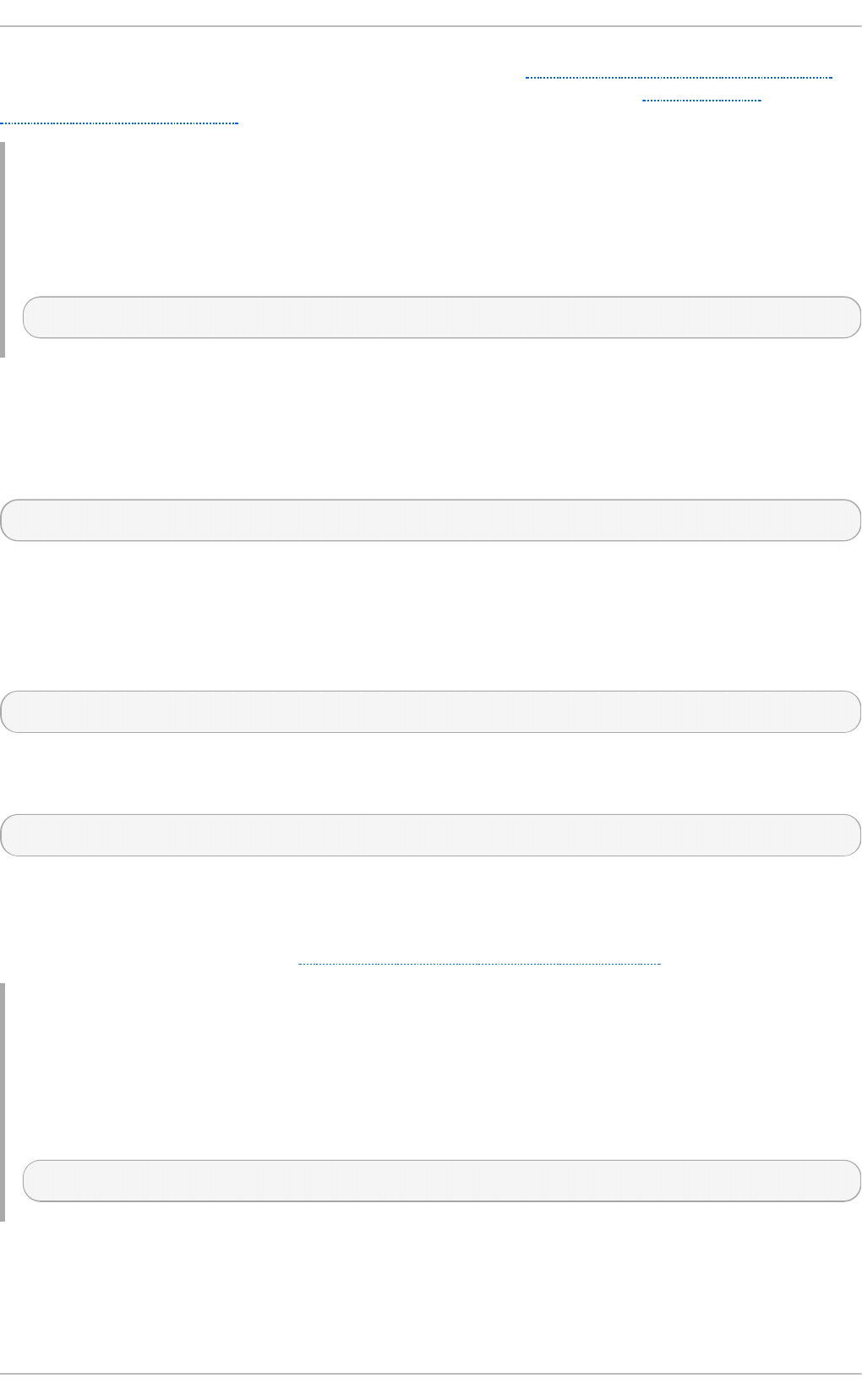

19 .6 . Ad d itio nal Reso urces

Chapt er 2 0 . Viewing and Managing Log Files

20 .1. Lo cating Lo g Files

20 .2. Basic Co nfig uratio n o f Rsyslo g

20 .3. Using the New Co nfig uratio n Fo rmat

20 .4. Wo rking with Queues in Rsyslo g

20 .5. Co nfig uring rsyslo g o n a Lo g g ing Server

20 .6 . Using Rsyslo g Mo d ules

20 .7. Interactio n o f Rsyslo g and Jo urnal

20 .8 . Structured Lo g g ing with Rsyslo g

20 .9 . Deb ug g ing Rsyslo g

20 .10 . Using the Jo urnal

20 .11. Manag ing Lo g Files in a Grap hical Enviro nment

20 .12. Ad d itio nal Reso urces

Chapt er 2 1 . Aut omat ing Syst em T asks

21.1. Cro n and Anacro n

21.2. At and Batch

21.3. Sched uling a Jo b to Run o n Next Bo o t Using a systemd Unit File

21.4. Ad d itio nal Reso urces

Chapt er 2 2 . Aut omat ic Bug Report ing T ool (ABRT )

22.1. Intro d uctio n to ABRT

22.2. Installing ABRT and Starting its Services

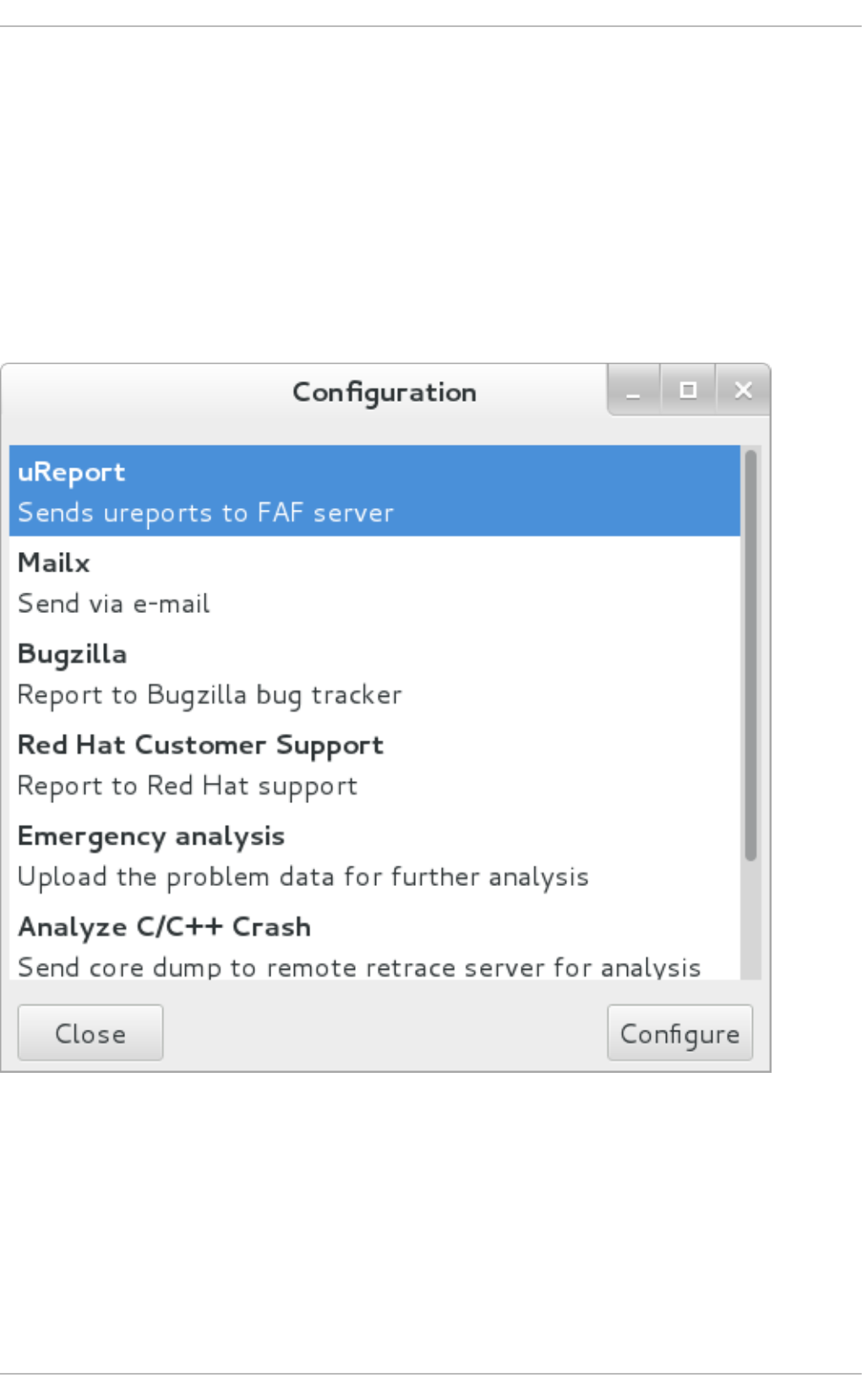

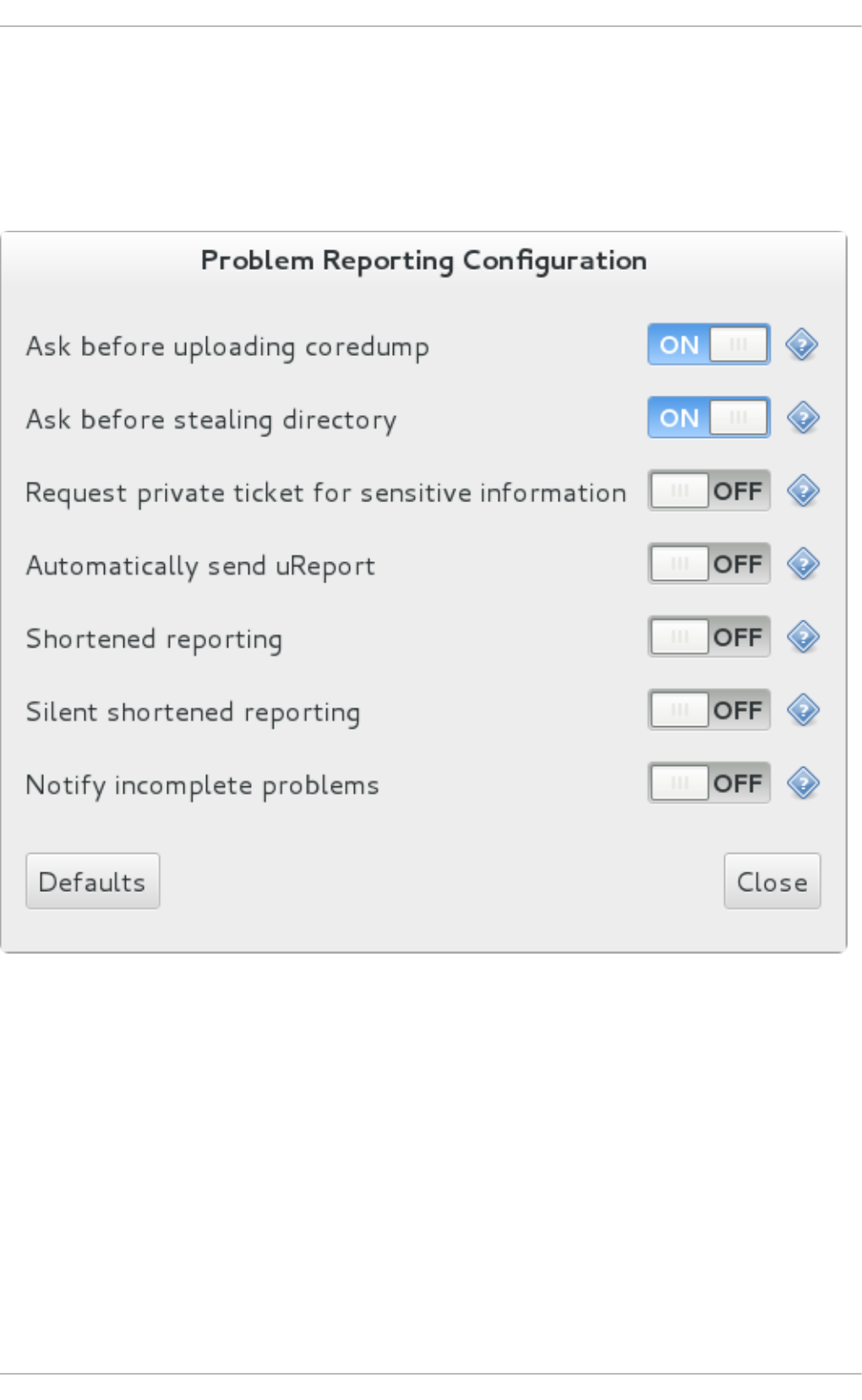

22.3. Co nfig uring ABRT

22.4. Detecting So ftware Pro b lems

22.5. Hand ling Detected Pro b lems

22.6 . Ad d itio nal Reso urces

Chapt er 2 3. O Profile

23.1. Overview o f To o ls

23.2. Using o p erf

23.3. Co nfig uring OPro file Using Leg acy Mo d e

23.4. Starting and Sto p p ing OPro file Using Leg acy Mo d e

23.5. Saving Data in Leg acy Mo d e

23.6 . Analyzing the Data

23.7. Und erstand ing the /d ev/o p ro file/ d irecto ry

23.8 . Examp le Usag e

23.9 . OPro file Sup p o rt fo r Java

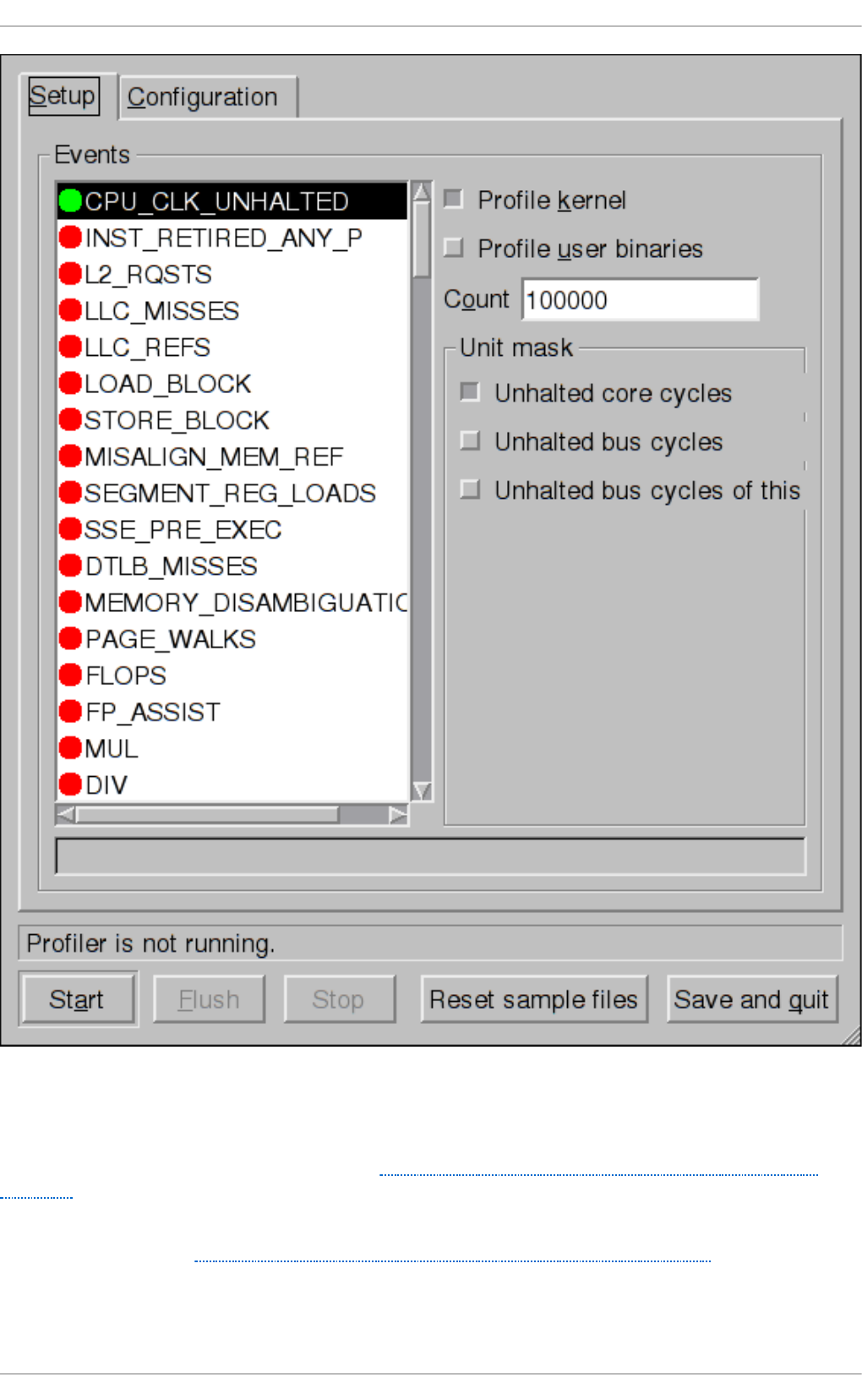

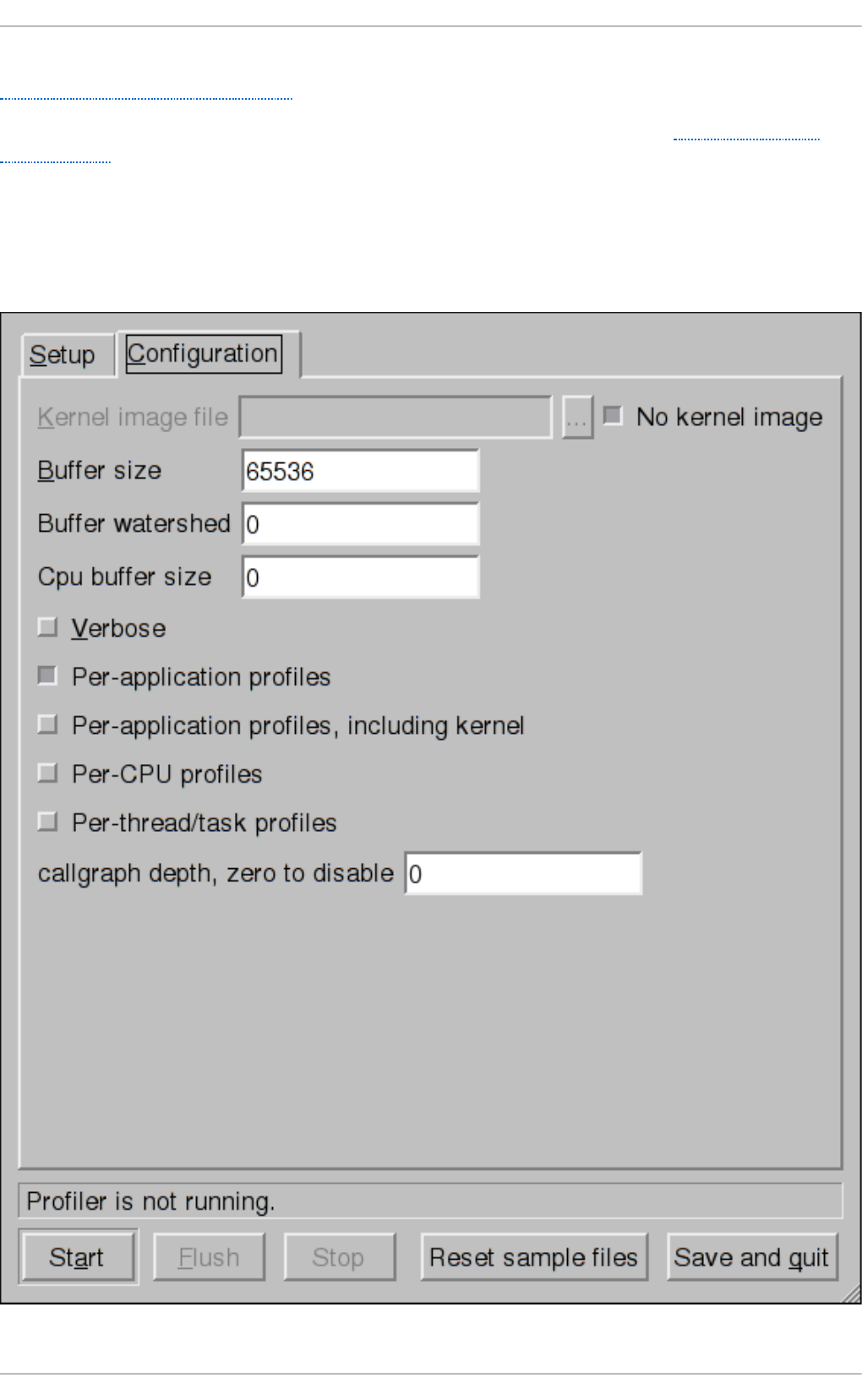

23.10 . Grap hical Interface

23.11. OPro file and SystemTap

23.12. Ad d itio nal Reso urces

Part VII. Kernel, Module and Driver Configurat ion

Chapt er 2 4 . Working wit h t he GRUB 2 Boot Loader

24.1. Intro d uctio n to G RUB 2

24.2. Co nfig uring the G RUB 2 Bo o t Lo ad er

24.3. Making Temp o rary Chang es to a G RUB 2 Menu

24.4. Making Persistent Chang es to a G RUB 2 Menu Using the g rub b y To o l

24.5. Custo mizing the G RUB 2 Co nfig uratio n File

24.6 . Pro tecting GRUB 2 with a Password

24.7. Reinstalling G RUB 2

24.8 . GRUB 2 o ver a Serial Co nso le

374

37 6

376

376

39 0

39 2

40 1

40 5

411

412

415

415

421

426

428

428

433

437

438

440

440

440

442

449

451

453

4 55

455

456

459

46 4

46 5

46 5

470

471

471

472

475

475

477

478

478

479

479

48 0

48 2

48 7

48 8

48 9

Syst em Administ rat or's Guide

4

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

24.9 . Terminal Menu Ed iting During Bo o t

24.10 . Unified Extensib le Firmware Interface (UEFI) Secure Bo o t

24.11. Ad d itio nal Reso urces

Chapt er 2 5. Manually Upgrading t he Kernel

25.1. Overview o f Kernel Packag es

25.2. Prep aring to Up g rad e

25.3. Do wnlo ad ing the Up g rad ed Kernel

25.4. Perfo rming the Up g rad e

25.5. Verifying the Initial RAM Disk Imag e

25.6 . Verifying the Bo o t Lo ad er

Chapt er 2 6 . Working wit h Kernel Modules

26 .1. Listing Currently-Lo ad ed Mo d ules

26 .2. Disp laying Info rmatio n Ab o ut a Mo d ule

26 .3. Lo ad ing a Mo d ule

26 .4. Unlo ad ing a Mo d ule

26 .5. Setting Mo d ule Parameters

26 .6 . Persistent Mo d ule Lo ad ing

26 .7. Installing Mo d ules fro m a Driver Up d ate Disk

26 .8 . Sig ning Kernel Mo d ules fo r Secure Bo o t

26 .9 . Ad d itio nal Reso urces

Part VIII. Syst em Backup and Recovery

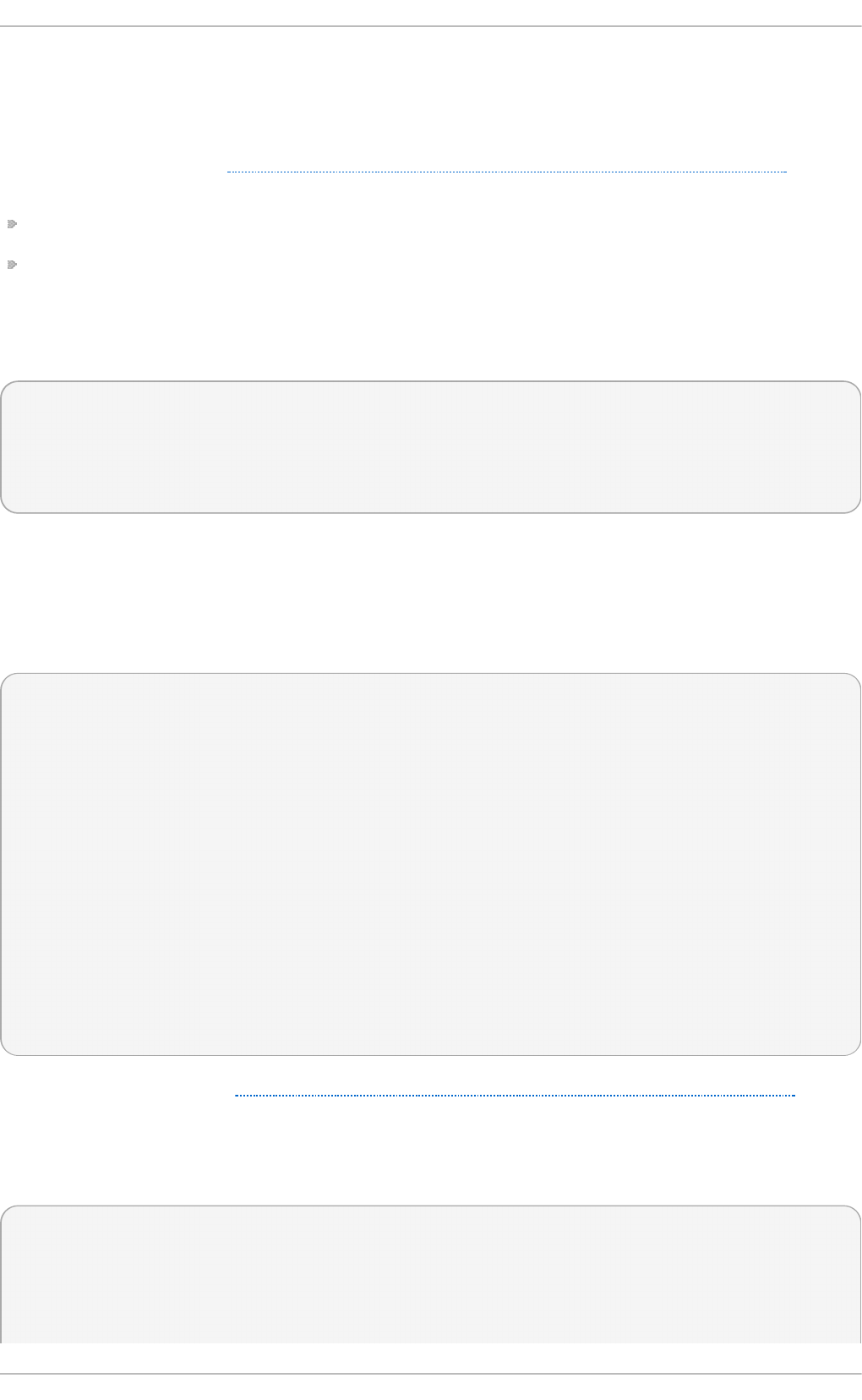

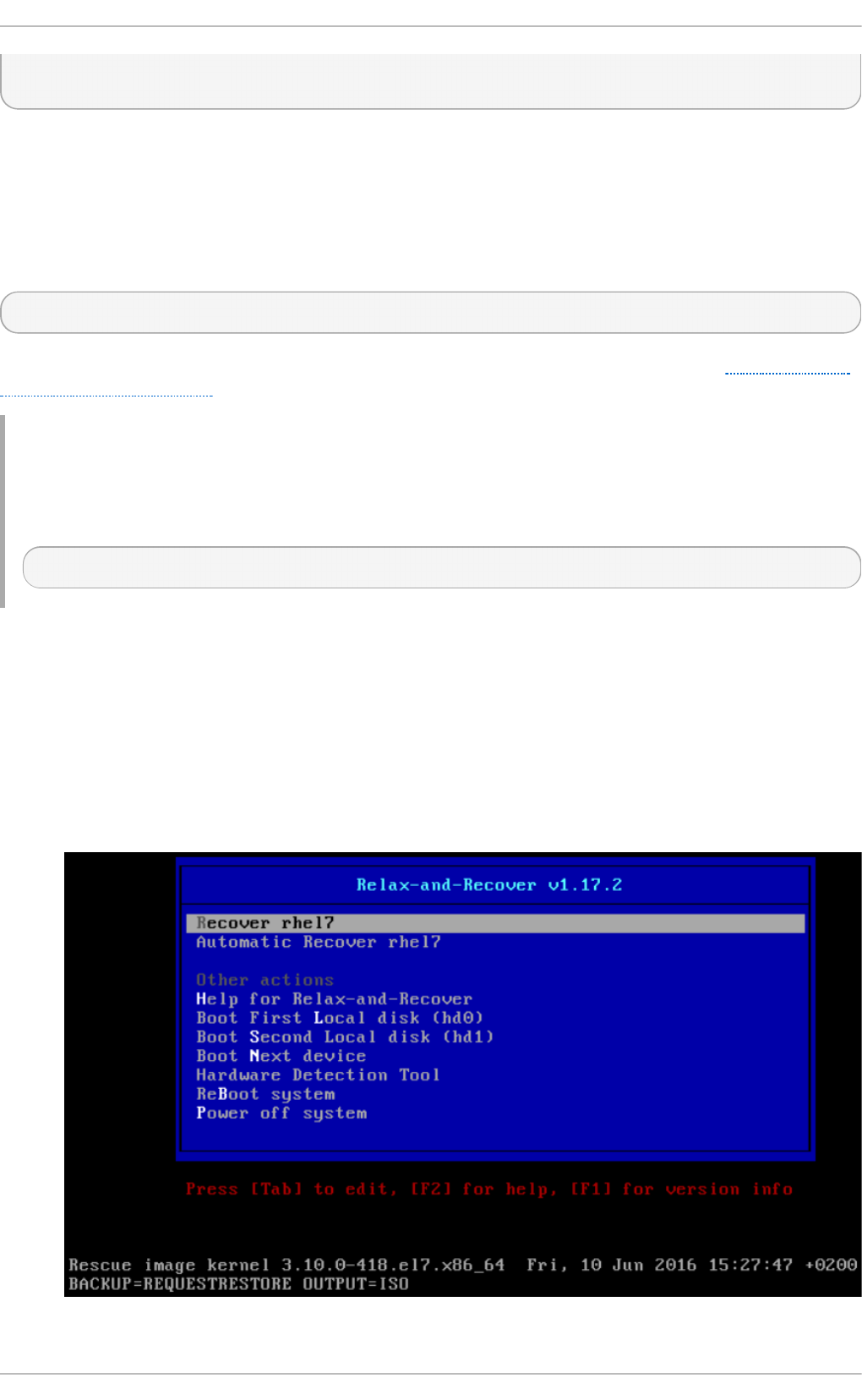

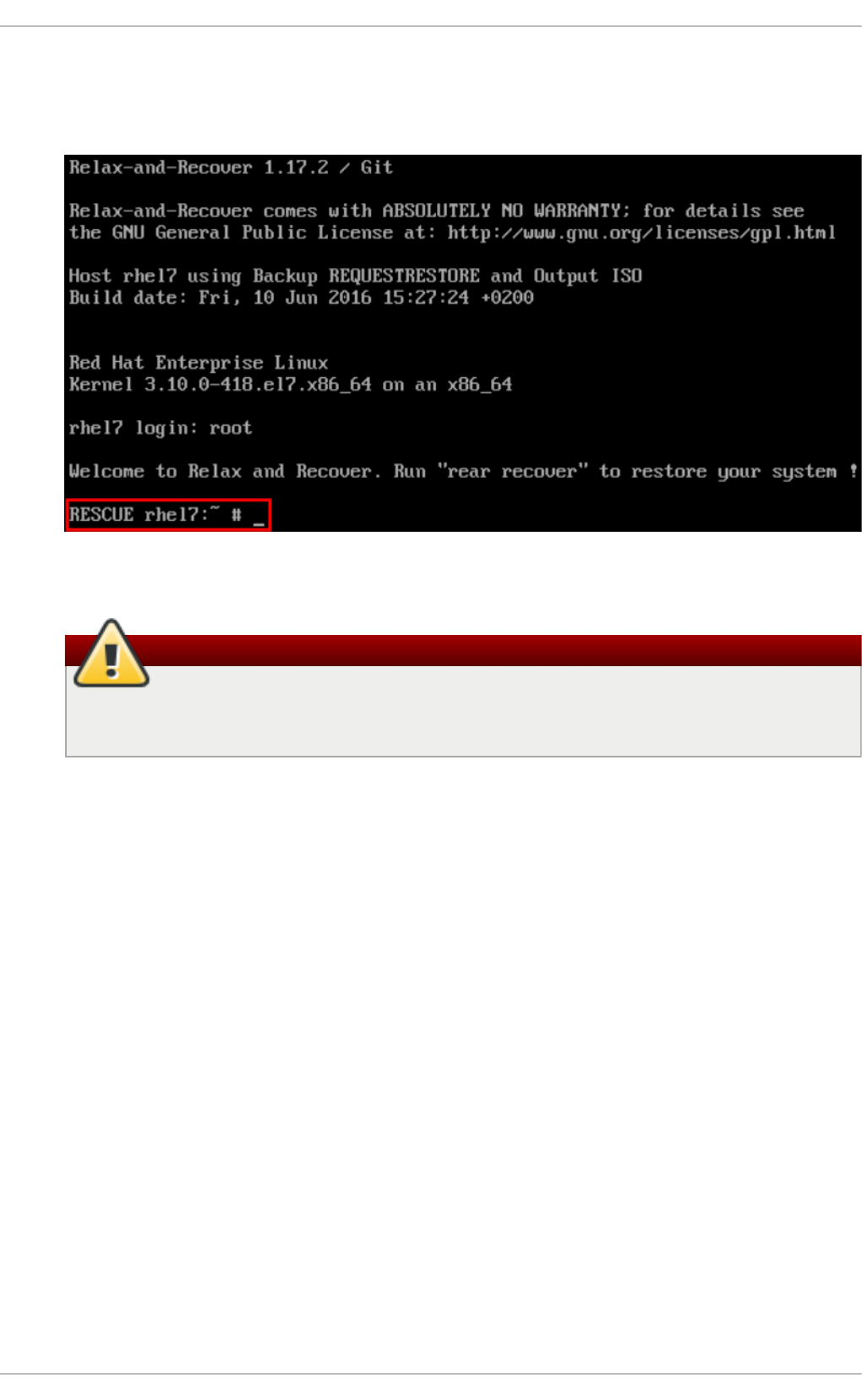

Chapt er 2 7 . Relax- and- Recover (ReaR)

27.1. Basic ReaR Usag e

27.2. Integ rating ReaR with Backup So ftware

Appendix A. RPM

A.1. RPM Desig n Go als

A.2. Using RPM

A.3. Find ing and Verifying RPM Packag es

A.4. Co mmo n Examp les o f RPM Usag e

A.5. Ad d itio nal Reso urces

Appendix B. Revision Hist ory

B.1. Ackno wled g ments

Index

49 1

49 6

49 7

499

49 9

50 0

50 1

50 2

50 2

50 5

50 7

50 7

50 8

511

512

513

514

514

517

523

52 5

52 6

526

532

536

536

537

543

544

545

54 6

546

54 6

T able of Cont ent s

5

Part I. Basic System Configuration

This part covers basic system administration tasks such as keyboard configuration, date and time

configuration, managing users and groups, and gaining privileges.

Syst em Administ rat or's Guide

6

Chapter 1. System Locale and Keyboard Configuration

The system locale specifies the language settings of system services and user interfaces. The

keyboard layout settings control the layout used on the text console and graphical user interfaces.

These settings can be made by modifying the /etc/locale.conf configuration file or by using the

localect l utility. Also, you can use the graphical user interface to perform the task; for a description

of this method, see Red Hat Enterprise Linux 7 Installation Guide.

1.1. Set ting the Syst em Locale

System-wide locale settings are stored in the /etc/locale.conf file, which is read at early boot by

the systemd daemon. The locale settings configured in /etc/locale.conf are inherited by every

service or user, unless individual programs or individual users override them.

The basic file format of /etc/locale.conf is a newline-separated list of variable assignments. For

example, German locale with English messages in /etc/locale.conf looks as follows:

LANG=de_DE.UTF-8

LC_MESSAGES=C

Here, the LC_MESSAGES option determines the locale used for diagnostic messages written to the

standard error output. To further specify locale settings in /etc/locale.conf, you can use

several other options, the most relevant are summarized in Table 1.1, “Options configurable in

/etc/locale.conf”. See the locale(7) manual page for detailed information on these options. Note

that the LC_ALL option, which represents all possible options, should not be configured in

/etc/locale.conf.

Tab le 1.1. O pt ions co nf igurable in /et c/locale.con f

Opt ion Description

LANG Provides a default value for the system locale.

LC_COLLATE Changes the behavior of functions which

compare strings in the local alphabet.

LC_CTYPE Changes the behavior of the character handling

and classification functions and the multibyte

character functions.

LC_NUMERIC Describes the way numbers are usually printed,

with details such as decimal point versus

decimal comma.

LC_TIME Changes the display of the current time, 24-hour

versus 12-hour clock.

LC_MESSAGES Determines the locale used for diagnostic

messages written to the standard error output.

1.1.1. Displaying t he Current Stat us

The l o cal ectl command can be used to query and change the system locale and keyboard layout

settings. To show the current settings, use the status option:

l o cal ectl status

Chapt er 1 . Syst em Locale and Keyboard Configurat ion

7

Example 1.1. Disp laying t he Current St atus

The output of the previous command lists the currently set locale, keyboard layout configured for

the console and for the X11 window system.

~]$ l o cal ectl status

System Locale: LANG=en_US.UTF-8

VC Keymap: us

X11 Layout: n/a

1.1.2. Listing Available Locales

To list all locales available for your system, type:

l o cal ectl l i st-l o cal es

Example 1.2. Listin g Lo cales

Imagine you want to select a specific English locale, but you are not sure if it is available on the

system. You can check that by listing all English locales with the following command:

~]$ l o cal ectl l i st-l o cal es | g rep en_

en_AG

en_AG.utf8

en_AU

en_AU.iso88591

en_AU.utf8

en_BW

en_BW.iso88591

en_BW.utf8

output truncated

1.1.3. Set t ing the Locale

To set the default system locale, use the following command as ro o t:

l o cal ectl set-locale LANG=locale

Replace locale with the locale name, found with the l o cal ectl l i st-l o cal es command. The

above syntax can also be used to configure parameters from Table 1.1, “ Options configurable in

/etc/locale.conf”.

Example 1.3. Changing th e Default Locale

For example, if you want to set British English as your default locale, first find the name of this

locale by using l i st-l o cal es. Then, as ro o t, type the command in the following form:

~]# l o cal ectl set-locale LANG=en_GB.utf8

Syst em Administ rat or's Guide

8

1.2. Changing the Keyboard Layout

The keyboard layout settings enable the user to control the layout used on the text console and

graphical user interfaces.

1.2.1. Displaying t he Current Set t ings

As mentioned before, you can check your current keyboard layout configuration with the following

command:

l o cal ectl status

Example 1.4 . Displaying th e Keyboard Set ting s

In the following output, you can see the keyboard layout configured for the virtual console and for

the X11 window system.

~]$ l o cal ectl status

System Locale: LANG=en_US.utf8

VC Keymap: us

X11 Layout: us

1.2.2. Listing Available Keymaps

To list all available keyboard layouts that can be configured on your system, type:

l o cal ectl list-keymaps

Example 1.5. Search ing for a Particular Keymap

You can use g rep to search the output of the previous command for a specific keymap name.

There are often multiple keymaps compatible with your currently set locale. For example, to find

available Czech keyboard layouts, type:

~]$ l o cal ectl list-keymaps | g rep cz

cz

cz-cp1250

cz-lat2

cz-lat2-prog

cz-qwerty

cz-us-qwertz

sunt5-cz-us

sunt5-us-cz

1.2.3. Set t ing the Keymap

Chapt er 1 . Syst em Locale and Keyboard Configurat ion

9

To set the default keyboard layout for your system, use the following command as ro o t:

l o cal ectl set-keymap map

Replace map with the name of the keymap taken from the output of the l o cal ectl list-keymaps

command. Unless the --no-convert option is passed, the selected setting is also applied to the

default keyboard mapping of the X11 window system, after converting it to the closest matching X11

keyboard mapping. This also applies in reverse, you can specify both keymaps with the following

command as ro o t:

l o cal ectl set-x11-keymap map

If you want your X11 layout to differ from the console layout, use the --no-convert option.

l o cal ectl --no-convert set-x11-keymap map

With this option, the X11 keymap is specified without changing the previous console layout setting.

Example 1.6 . Set ting th e X11 Keymap Separately

Imagine you want to use German keyboard layout in the graphical interface, but for console

operations you want to retain the US keymap. To do so, type as ro o t:

~]# l o cal ectl --no-convert set-x11-keymap de

Then you can verify if your setting was successful by checking the current status:

~]$ l o cal ectl status

System Locale: LANG=de_DE.UTF-8

VC Keymap: us

X11 Layout: de

Apart from keyboard layout (map), three other options can be specified:

l o cal ectl set-x11-keymap map model variant options

Replace model with the keyboard model name, variant and options with keyboard variant and option

components, which can be used to enhance the keyboard behavior. These options are not set by

default. For more information on X11 Model, X11 Variant, and X11 Options see the kbd(4) man

page.

1.3. Additional Resources

For more information on how to configure the keyboard layout on Red Hat Enterprise Linux, see the

resources listed below:

Inst alled Document at ion

l o cal ectl (1) — The manual page for the l o cal ectl command line utility documents how to

use this tool to configure the system locale and keyboard layout.

Syst em Administ rat or's Guide

10

loadkeys(1) — The manual page for the loadkeys command provides more information on

how to use this tool to change the keyboard layout in a virtual console.

See Also

Chapter 5, Gaining Privileges documents how to gain administrative privileges by using the su and

sud o commands.

Chapter 9, Managing Services with systemd provides more information on systemd and documents

how to use the systemctl command to manage system services.

Chapt er 1 . Syst em Locale and Keyboard Configurat ion

11

Chapter 2. Configuring the Date and Time

Modern operating systems distinguish between the following two types of clocks:

A real-time clock (RTC), commonly referred to as a hardware clock, (typically an integrated circuit on

the system board) that is completely independent of the current state of the operating system and

runs even when the computer is shut down.

A system clock, also known as a software clock, that is maintained by the kernel and its initial

value is based on the real-time clock. Once the system is booted and the system clock is

initialized, the system clock is completely independent of the real-time clock.

The system time is always kept in Coordinated Universal Time (UTC) and converted in applications to

local time as needed. Local time is the actual time in your current time zone, taking into account

daylight saving time (DST). The real-time clock can use either UTC or local time. UTC is recommended.

Red Hat Enterprise Linux 7 offers three command line tools that can be used to configure and display

information about the system date and time: the ti med atectl utility, which is new in Red Hat

Enterprise Linux 7 and is part of systemd; the traditional d ate command; and the hwclock utility

for accessing the hardware clock.

2.1. Using t he ti med atectl Command

The timed atectl utility is distributed as part of the systemd system and service manager and

allows you to review and change the configuration of the system clock. You can use this tool to

change the current date and time, set the time zone, or enable automatic synchronization of the

system clock with a remote server.

For information on how to display the current date and time in a custom format, see also Section 2.2,

“Using the date Command”.

2.1.1. Displaying t he Current Dat e and T ime

To display the current date and time along with detailed information about the configuration of the

system and hardware clock, run the ti med atectl command with no additional command line

options:

ti med atectl

This displays the local and universal time, the currently used time zone, the status of the Network

Time Protocol (NTP) configuration, and additional information related to DST.

Example 2.1. Disp laying t he Current Date and Time

The following is an example output of the ti med atectl command on a system that does not use

NTP to synchronize the system clock with a remote server:

~]$ ti med atectl

Local time: Mon 2013-09-16 19:30:24 CEST

Universal time: Mon 2013-09-16 17:30:24 UTC

Timezone: Europe/Prague (CEST, +0200)

NTP enabled: no

NTP synchronized: no

RTC in local TZ: no

Syst em Administ rat or's Guide

12

DST active: yes

Last DST change: DST began at

Sun 2013-03-31 01:59:59 CET

Sun 2013-03-31 03:00:00 CEST

Next DST change: DST ends (the clock jumps one hour backwards) at

Sun 2013-10-27 02:59:59 CEST

Sun 2013-10-27 02:00:00 CET

Important

Changes to the status of chrony or ntpd will not be immediately noticed by ti med atectl . If

changes to the configuration or status of these tools is made, enter the following command:

~]# systemctl restart systemd-timedated.services

2.1.2. Changing t he Current T ime

To change the current time, type the following at a shell prompt as ro o t:

ti med atectl set-time HH:MM:SS

Replace HH with an hour, MM with a minute, and SS with a second, all typed in two-digit form.

This command updates both the system time and the hardware clock. The result it is similar to using

both the date --set and hwclock --systohc commands.

The command will fail if an NTP service is enabled. See Section 2.1.5, “Synchronizing the System

Clock with a Remote Server” to temporally disable the service.

Example 2.2. Changing th e Curren t Time

To change the current time to 11:26 p.m., run the following command as ro o t:

~]# ti med atectl set-ti me 23: 26 : 0 0

By default, the system is configured to use UTC. To configure your system to maintain the clock in the

local time, run the ti med atectl command with the set-local-rtc option as ro o t:

ti med atectl set-local-rtc boolean

To configure your system to maintain the clock in the local time, replace boolean with yes (or,

alternatively, y, true, t, or 1). To configure the system to use UTC, replace boolean with no (or,

alternatively, n, false, f, or 0). The default option is no .

2.1.3. Changing t he Current Dat e

To change the current date, type the following at a shell prompt as ro o t:

Chapt er 2 . Configuring t he Dat e and T ime

13

ti med atectl set-time YYYY-MM-DD

Replace YYYY with a four-digit year, MM with a two-digit month, and DD with a two-digit day of the

month.

Note that changing the date without specifying the current time results in setting the time to 00:00:00.

Example 2.3. Changing th e Curren t Dat e

To change the current date to 2 June 2013 and keep the current time (11:26 p.m.), run the

following command as ro o t:

~]# ti med atectl set-ti me ' 20 13-0 6 -0 2 23: 26 : 0 0 '

2.1.4 . Changing t he T ime Zone

To list all available time zones, type the following at a shell prompt:

ti med atectl l i st-ti mezo nes

To change the currently used time zone, type as ro o t:

ti med atectl set-timezone time_zone

Replace time_zone with any of the values listed by the timedatectl list-timezones command.

Example 2.4 . Changing the Time Z on e

To identify which time zone is closest to your present location, use the ti med atectl command

with the list-timezones command line option. For example, to list all available time zones in

Europe, type:

~]# timedatectl list-timezones | grep Europe

Europe/Amsterdam

Europe/Andorra

Europe/Athens

Europe/Belgrade

Europe/Berlin

Europe/Bratislava

…

To change the time zone to Europe/Prague, type as ro o t:

~]# timedatectl set-timezone Europe/Prague

2.1.5. Synchronizing the Syst em Clock wit h a Remot e Server

Syst em Administ rat or's Guide

14

As opposed to the manual adjustments described in the previous sections, the ti med atectl

command also allows you to enable automatic synchronization of your system clock with a group of

remote servers using the NTP protocol. Enabling NTP enables the chronyd or ntpd service,

depending on which of them is installed.

The NTP service can be enabled and disabled using a command as follows:

ti med atectl set-ntp boolean

To enable your system to synchronize the system clock with a remote NTP server, replace boolean

with yes (the default option). To disable this feature, replace boolean with no .

Example 2.5. Synchroniz ing th e System Clock wit h a Remote Server

To enable automatic synchronization of the system clock with a remote server, type:

~]# timedatectl set-ntp yes

The command will fail if an NTP service is not installed. See Section 15.3.1, “Installing chrony” for

more information.

2.2. Using t he dat e Command

The d ate utility is available on all Linux systems and allows you to display and configure the current

date and time. It is frequently used in scripts to display detailed information about the system clock in

a custom format.

For information on how to change the time zone or enable automatic synchronization of the system

clock with a remote server, see Section 2.1, “Using the ti med atectl Command”.

2.2.1. Displaying t he Current Dat e and T ime

To display the current date and time, run the d ate command with no additional command line

options:

d ate

This displays the day of the week followed by the current date, local time, abbreviated time zone, and

year.

By default, the d ate command displays the local time. To display the time in UTC, run the command

with the --utc or -u command line option:

d ate --utc

You can also customize the format of the displayed information by providing the + "format" option

on the command line:

d ate + "format"

Chapt er 2 . Configuring t he Dat e and T ime

15

Replace format with one or more supported control sequences as illustrated in Example 2.6,

“Displaying the Current Date and Time”. See Table 2.1, “Commonly Used Control Sequences” for a

list of the most frequently used formatting options, or the d ate(1) manual page for a complete list of

these options.

Tab le 2.1. Co mmonly Used Con trol Sequen ces

Co nt rol Sequ ence Description

%H The hour in the HH format (for example, 17).

%M The minute in the MM format (for example, 30 ).

%S The second in the SS format (for example, 24 ).

%d The day of the month in the DD format (for example, 16 ).

%m The month in the MM format (for example, 09).

%Y The year in the YYYY format (for example, 20 13).

%Z The time zone abbreviation (for example, C EST ).

%F The full date in the YYYY-MM-DD format (for example, 20 13-0 9 -16 ). This

option is equal to %Y-%m-%d.

%T The full time in the HH:MM:SS format (for example, 17:30:24). This option

is equal to %H:%M:%S

Example 2.6 . Displaying th e Curren t Dat e and Time

To display the current date and local time, type the following at a shell prompt:

~]$ d ate

Mon Sep 16 17:30:24 CEST 2013

To display the current date and time in UTC, type the following at a shell prompt:

~]$ date --utc

Mon Sep 16 15:30:34 UTC 2013

To customize the output of the d ate command, type:

~]$ date +"%Y-%m-%d %H:%M"

2013-09-16 17:30

2.2.2. Changing t he Current T ime

To change the current time, run the d ate command with the --set or -s option as ro o t:

d ate --set HH:MM:SS

Replace HH with an hour, MM with a minute, and SS with a second, all typed in two-digit form.

By default, the d ate command sets the system clock to the local time. To set the system clock in UTC,

run the command with the --utc or -u command line option:

d ate --set HH:MM:SS --utc

Syst em Administ rat or's Guide

16

Example 2.7. Changing th e Curren t Time

To change the current time to 11:26 p.m., run the following command as ro o t:

~]# date --set 23:26:00

2.2.3. Changing t he Current Dat e

To change the current date, run the d ate command with the --set or -s option as ro o t:

d ate --set YYYY-MM-DD

Replace YYYY with a four-digit year, MM with a two-digit month, and DD with a two-digit day of the

month.

Note that changing the date without specifying the current time results in setting the time to 00:00:00.

Example 2.8. Changing th e Curren t Dat e

To change the current date to 2 June 2013 and keep the current time (11:26 p.m.), run the

following command as ro o t:

~]# date --set 2013-06-02 23:26:00

2.3. Using the hwclock Command

hwclock is a utility for accessing the hardware clock, also referred to as the Real Time Clock (RTC).

The hardware clock is independent of the operating system you use and works even when the

machine is shut down. This utility is used for displaying the time from the hardware clock. hwclock

also contains facilities for compensating for systematic drift in the hardware clock.

The hardware clock stores the values of: year, month, day, hour, minute, and second. It is not able to

store the time standard, local time or Coordinated Universal Time (UTC), nor set the Daylight Saving

Time (DST).

The hwclock utility saves its settings in the /etc/adjtime file, which is created with the first

change you make, for example, when you set the time manually or synchronize the hardware clock

with the system time.

Note

In Red Hat Enterprise Linux 6, the hwclock command was run automatically on every system

shutdown or reboot, but it is not in Red Hat Enterprise Linux 7. When the system clock is

synchronized by the Network Time Protocol (NTP) or Precision Time Protocol (PTP), the kernel

automatically synchronizes the hardware clock to the system clock every 11 minutes.

Chapt er 2 . Configuring t he Dat e and T ime

17

For details about NTP, see Chapter 15, Configuring NTP Using the chrony Suite and Chapter 16,

Configuring NTP Using ntpd. For information about PTP, see Chapter 17, Configuring PTP Using ptp4l.

For information about setting the hardware clock after executing n tp date, see Section 16.18,

“Configuring the Hardware Clock Update”.

2.3.1. Displaying t he Current Dat e and T ime

Running hwclock with no command line options as the ro o t user returns the date and time in local

time to standard output.

hwclock

Note that using the --utc or --localtime options with the hwclock command does not mean

you are displaying the hardware clock time in UTC or local time. These options are used for setting

the hardware clock to keep time in either of them. The time is always displayed in local time.

Additionally, using the hwclock --utc or hwclock --local commands does not change the

record in the /etc/adjtime file. This command can be useful when you know that the setting saved

in /etc/adjtime is incorrect but you do not want to change the setting. On the other hand, you

may receive misleading information if you use the command an incorrect way. See the hwclock(8)

manual page for more details.

Example 2.9 . Displaying th e Curren t Dat e and Time

To display the current date and the current local time from the hardware clock, run as ro o t:

~]# hwclock

Tue 15 Apr 2014 04:23:46 PM CEST -0.329272 seconds

CEST is a time zone abbreviation and stands for Central European Summer Time.

For information on how to change the time zone, see Section 2.1.4, “Changing the Time Zone” .

2.3.2. Set t ing the Date and T ime

Besides displaying the date and time, you can manually set the hardware clock to a specific time.

When you need to change the hardware clock date and time, you can do so by appending the --set

and --date options along with your specification:

hwclock --set --date "dd mmm yyyy HH:MM"

Replace dd with a day (a two-digit number), mmm with a month (a three-letter abbreviation), yyyy with

a year (a four-digit number), HH with an hour (a two-digit number), MM with a minute (a two-digit

number).

At the same time, you can also set the hardware clock to keep the time in either UTC or local time by

adding the --utc or --localtime options, respectively. In this case, UTC or LOCAL is recorded in

the /etc/adjtime file.

Example 2.10. Sett in g t he Hardware Clock to a Specific Date and Time

Syst em Administ rat or's Guide

18

If you want to set the date and time to a specific value, for example, to "21:17, October 21, 2014",

and keep the hardware clock in UTC, run the command as ro o t in the following format:

~]# hwclock --set --date "21 Oct 2014 21:17" --utc

2.3.3. Synchronizing the Dat e and T ime

You can synchronize the hardware clock and the current system time in both directions.

Either you can set the hardware clock to the current system time by using this command:

hwclock --systohc

Note that if you use NTP, the hardware clock is automatically synchronized to the system clock

every 11 minutes, and this command is useful only at boot time to get a reasonable initial system

time.

Or, you can set the system time from the hardware clock by using the following command:

hwclock --hctosys

When you synchronize the hardware clock and the system time, you can also specify whether you

want to keep the hardware clock in local time or UTC by adding the --utc or --localtime option.

Similarly to using --set, UTC or LOCAL is recorded in the /etc/adjtime file.

The hwclock --systohc --utc command is functionally similar to ti med atectl set-l o cal -

rtc false and the hwclock --systohc --local command is an alternative to ti med atectl

set-local-rtc true.

Example 2.11. Synchroniz ing the Hard ware Clo ck with Syst em Time

To set the hardware clock to the current system time and keep the hardware clock in local time, run

the following command as ro o t:

~]# hwclock --systohc --localtime

To avoid problems with time zone and DST switching, it is recommended to keep the hardware

clock in UTC. The shown Example 2.11, “Synchronizing the Hardware Clock with System Time” is

useful, for example, in case of a multi boot with a Windows system, which assumes the hardware

clock runs in local time by default, and all other systems need to accommodate to it by using local

time as well. It may also be needed with a virtual machine; if the virtual hardware clock provided by

the host is running in local time, the guest system needs to be configured to use local time, too.

2.4. Additional Resources

For more information on how to configure the date and time in Red Hat Enterprise Linux 7, see the

resources listed below.

Inst alled Document at ion

Chapt er 2 . Configuring t he Dat e and T ime

19

ti med atectl (1) — The manual page for the ti med atectl command line utility documents how

to use this tool to query and change the system clock and its settings.

d ate(1) — The manual page for the d ate command provides a complete list of supported

command line options.

hwclock(8) — The manual page for the hwclock command provides a complete list of

supported command line options.

See Also

Chapter 1, System Locale and Keyboard Configuration documents how to configure the keyboard

layout.

Chapter 5, Gaining Privileges documents how to gain administrative privileges by using the su and

sud o commands.

Chapter 9, Managing Services with systemd provides more information on systemd and documents

how to use the systemctl command to manage system services.

Syst em Administ rat or's Guide

20

Chapter 3. Managing Users and Groups

The control of users and groups is a core element of Red Hat Enterprise Linux system administration.

This chapter explains how to add, manage, and delete users and groups in the graphical user

interface and on the command line, and covers advanced topics, such as creating group directories.

3.1. Int roduct ion to Users and Groups

While users can be either people (meaning accounts tied to physical users) or accounts that exist for

specific applications to use, groups are logical expressions of organization, tying users together for

a common purpose. Users within a group share the same permissions to read, write, or execute files

owned by that group.

Each user is associated with a unique numerical identification number called a user ID (UID).

Likewise, each group is associated with a group ID (GID). A user who creates a file is also the owner

and group owner of that file. The file is assigned separate read, write, and execute permissions for

the owner, the group, and everyone else. The file owner can be changed only by ro o t, and access

permissions can be changed by both the ro o t user and file owner.

Additionally, Red Hat Enterprise Linux supports access control lists (ACLs) for files and directories

which allow permissions for specific users outside of the owner to be set. For more information about

this feature, see Chapter 4, Access Control Lists.

Reserved User and Gro up IDs

Red Hat Enterprise Linux reserves user and group IDs below 1000 for system users and groups. By

default, the User Man ager does not display the system users. Reserved user and group IDs are

documented in the setup package. To view the documentation, use this command:

cat /usr/share/doc/setup*/uidgid

The recommended practice is to assign non-reserved IDs starting at 5,000, as the reserved range

can increase in the future. To make the IDs assigned to new users by default start at 5,000, change

the UID_MIN and GID_MIN directives in the /etc/login.defs file:

[file contents truncated]

UID_MIN 5000

[file contents truncated]

GID_MIN 5000

[file contents truncated]

Note

For users created before you changed UID_MIN and GID_MIN directives, UIDs will still start

at the default 1000.

Even with new user and group IDs beginning with 5,000, it is recommended not to raise IDs reserved

by the system above 1000 to avoid conflict with systems that retain the 1000 limit.

3.1.1. User Privat e Groups

Chapt er 3. Managing Users and G roups

21

Red Hat Enterprise Linux uses a user private group (UPG) scheme, which makes UNIX groups easier to

manage. A user private group is created whenever a new user is added to the system. It has the same

name as the user for which it was created and that user is the only member of the user private group.

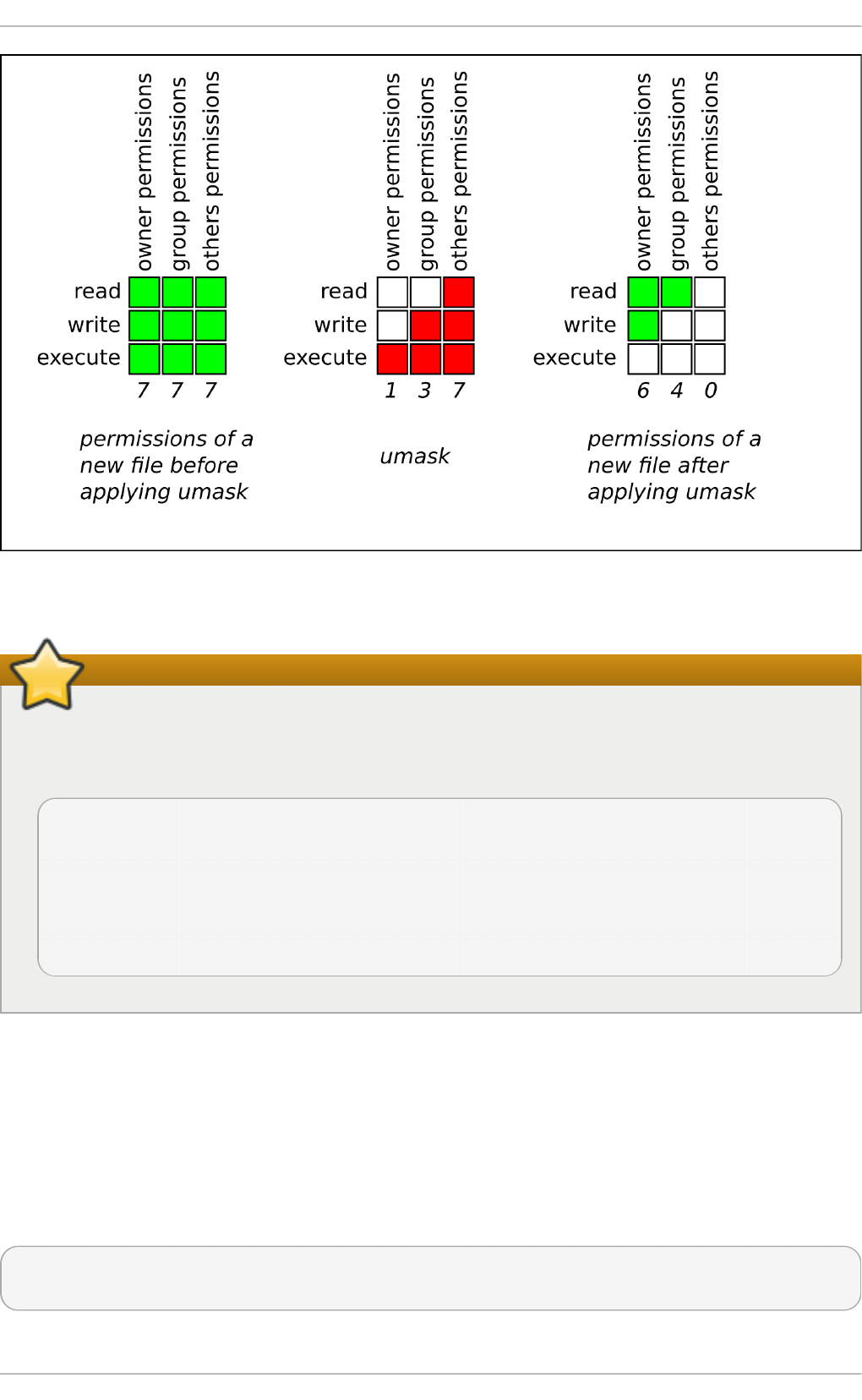

User private groups make it safe to set default permissions for a newly created file or directory,

allowing both the user and the group of that user to make modifications to the file or directory.

The setting which determines what permissions are applied to a newly created file or directory is

called a umask and is configured in the /etc/bashrc file. Traditionally on UNIX-based systems, the

umask is set to 022, which allows only the user who created the file or directory to make

modifications. Under this scheme, all other users, including members of the creator's group, are not

allowed to make any modifications. However, under the UPG scheme, this “group protection” is not

necessary since every user has their own private group. See Section 3.3.4, “Setting Default

Permissions for New Files Using umask” for more information.

A list of all groups is stored in the /etc/group configuration file.

3.1.2. Shadow Passwords

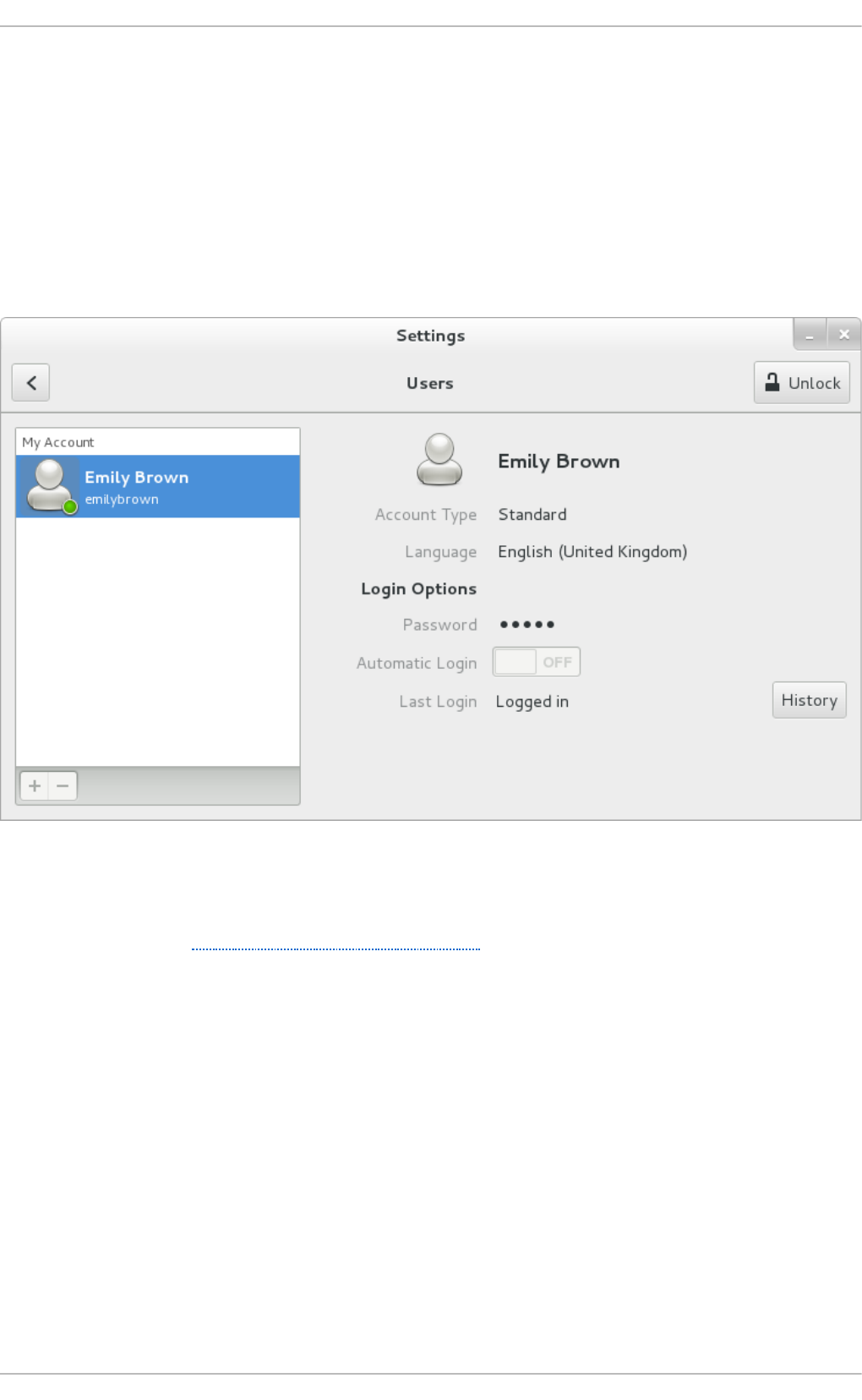

In environments with multiple users, it is very important to use shadow passwords provided by the