Virtualization Deployment And Administration Guide Red Hat Enterprise Linux 7 En US

Red_Hat_Enterprise_Linux-7-Virtualization_Deployment_and_Administration_Guide-en-US

User Manual:

Open the PDF directly: View PDF ![]() .

.

Page Count: 582 [warning: Documents this large are best viewed by clicking the View PDF Link!]

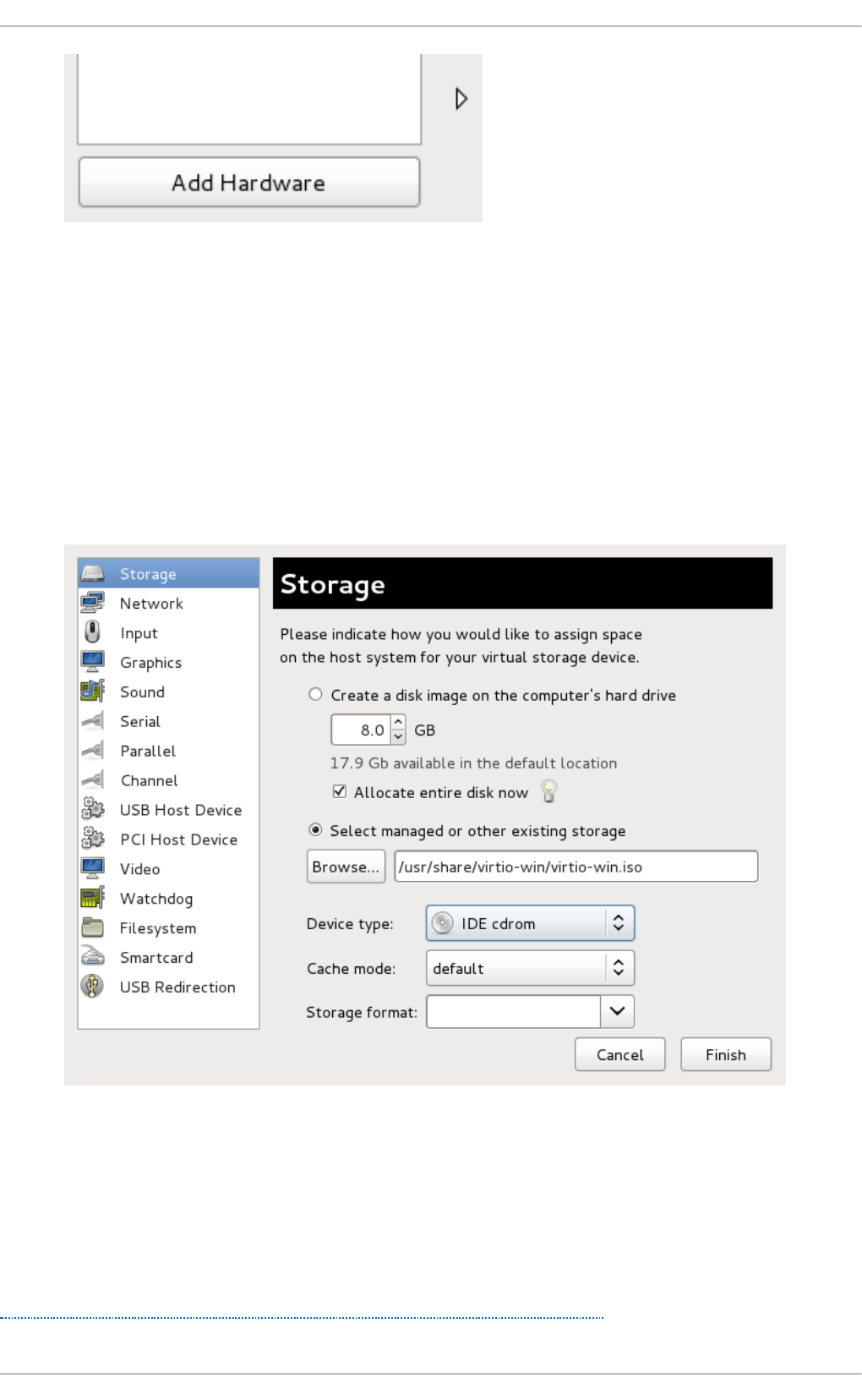

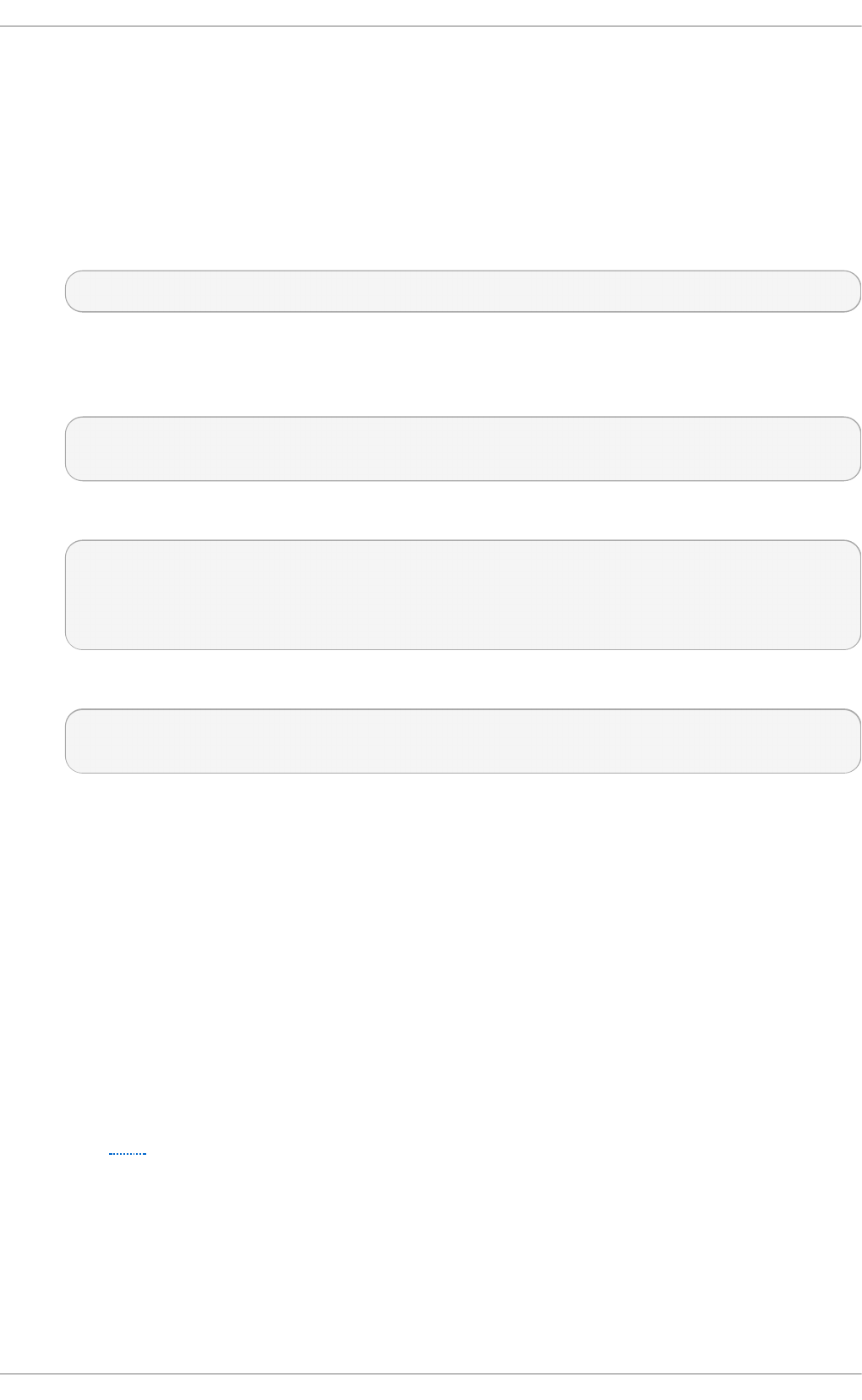

- Table of Contents

- Part I. Deployment

- Chapter 1. System requirements

- Chapter 2. Installing the virtualization packages

- Chapter 3. Installing a virtual machine

- Chapter 4. Virtualizing Red Hat Enterprise Linux on Other Platforms

- Chapter 5. Installing a fully-virtualized Windows guest

- Chapter 6. KVM Para-virtualized (virtio) Drivers

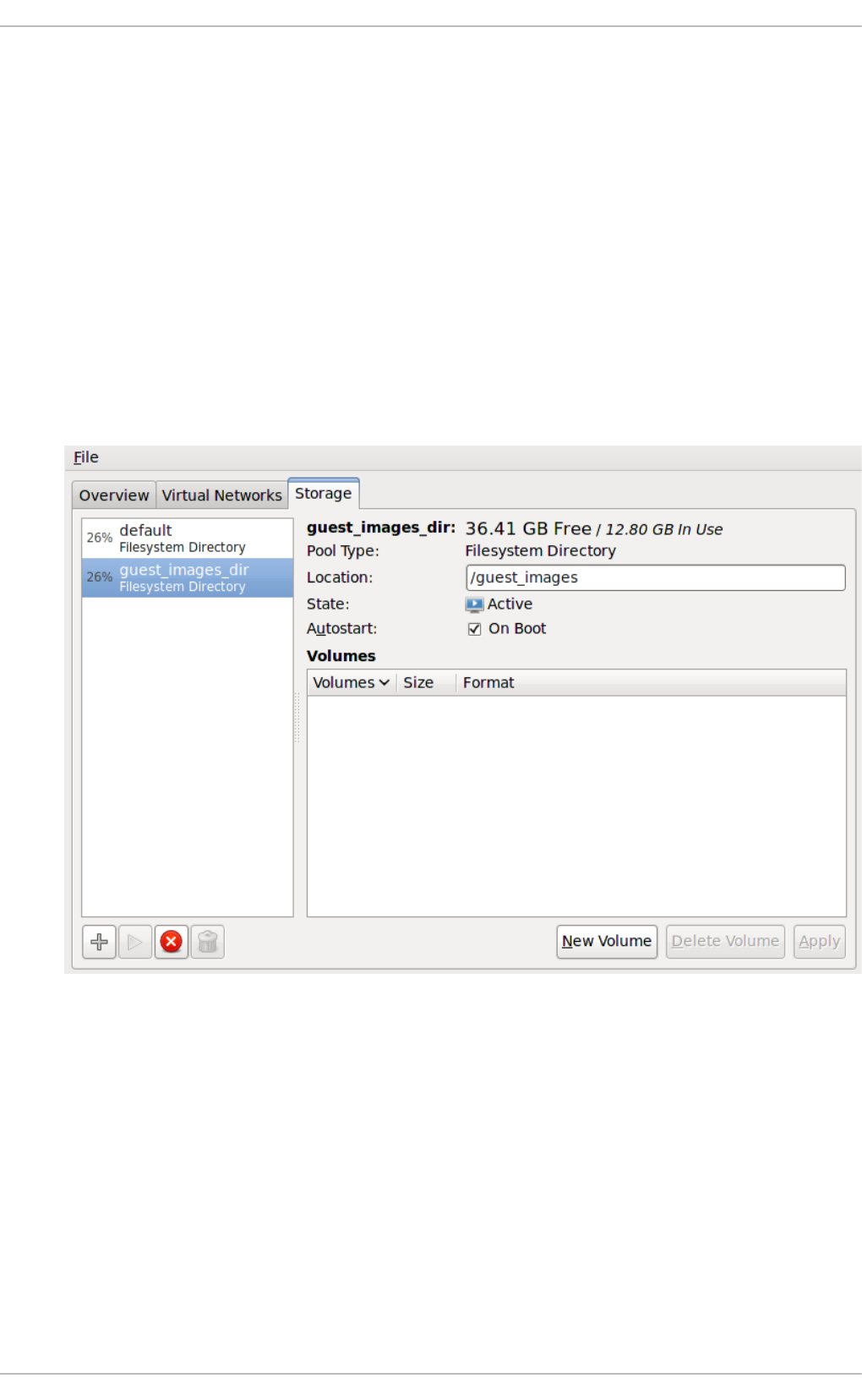

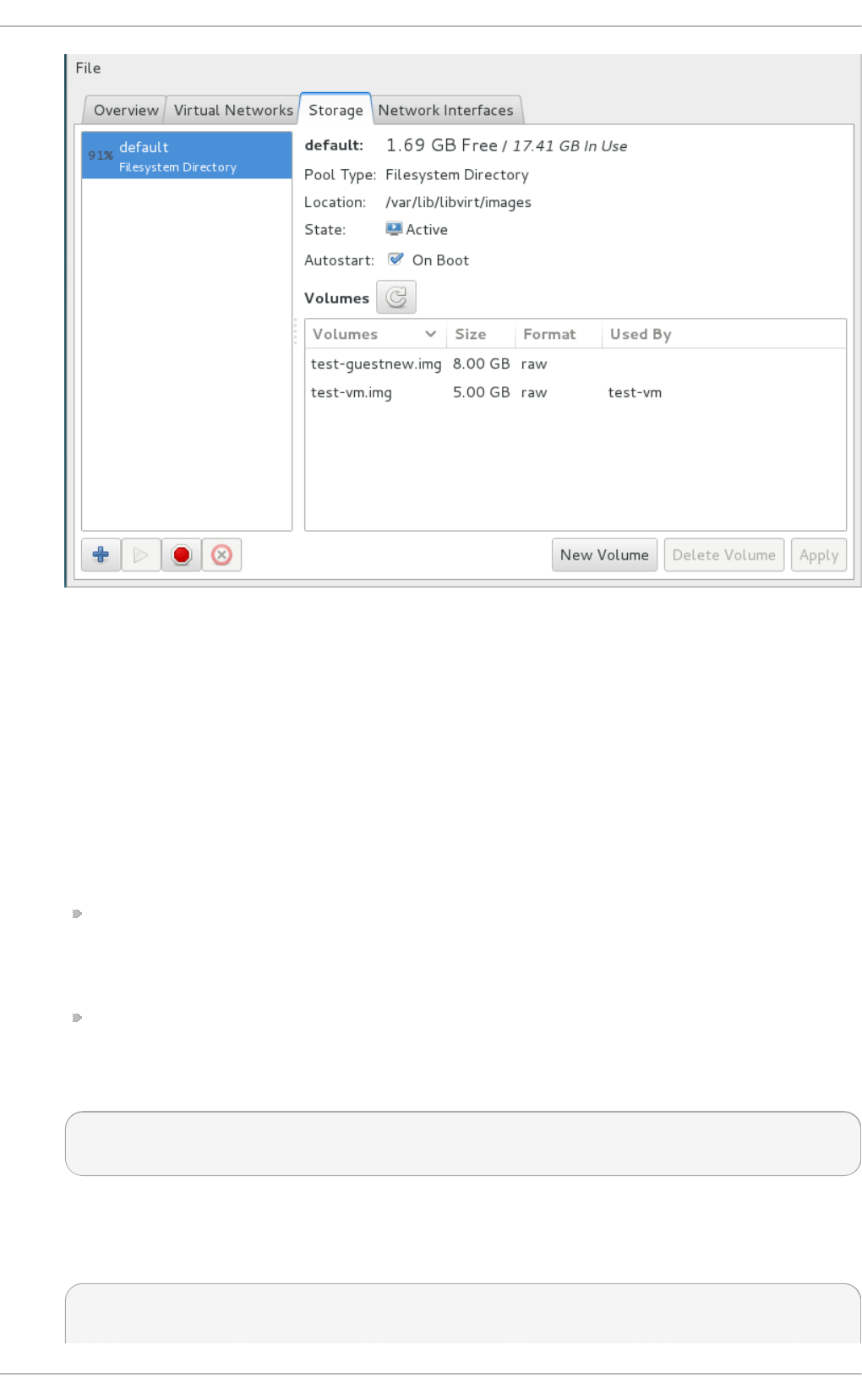

- Chapter 7. Network configuration

- Chapter 8. Overcommitting with KVM

- Chapter 9. KVM guest timing management

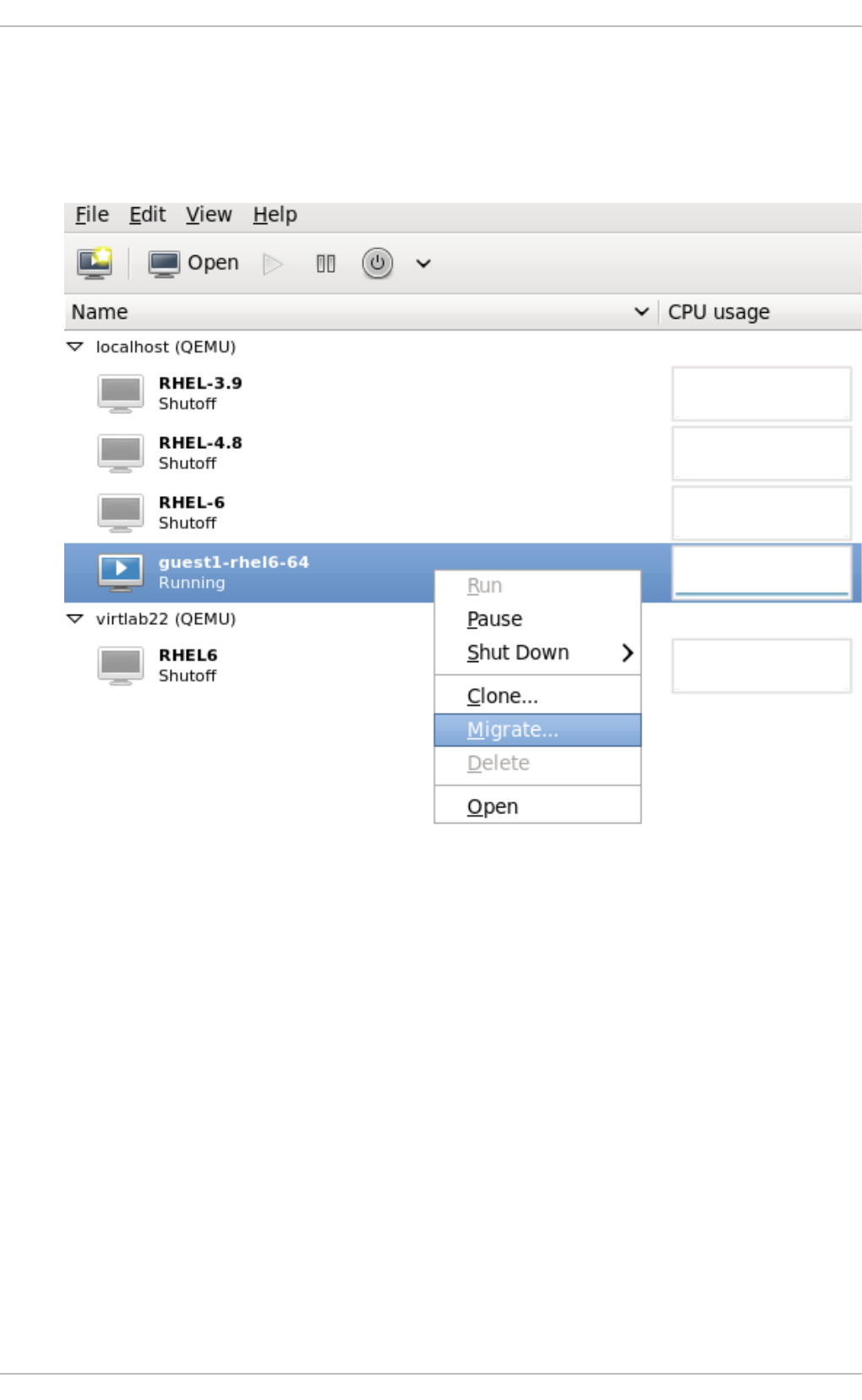

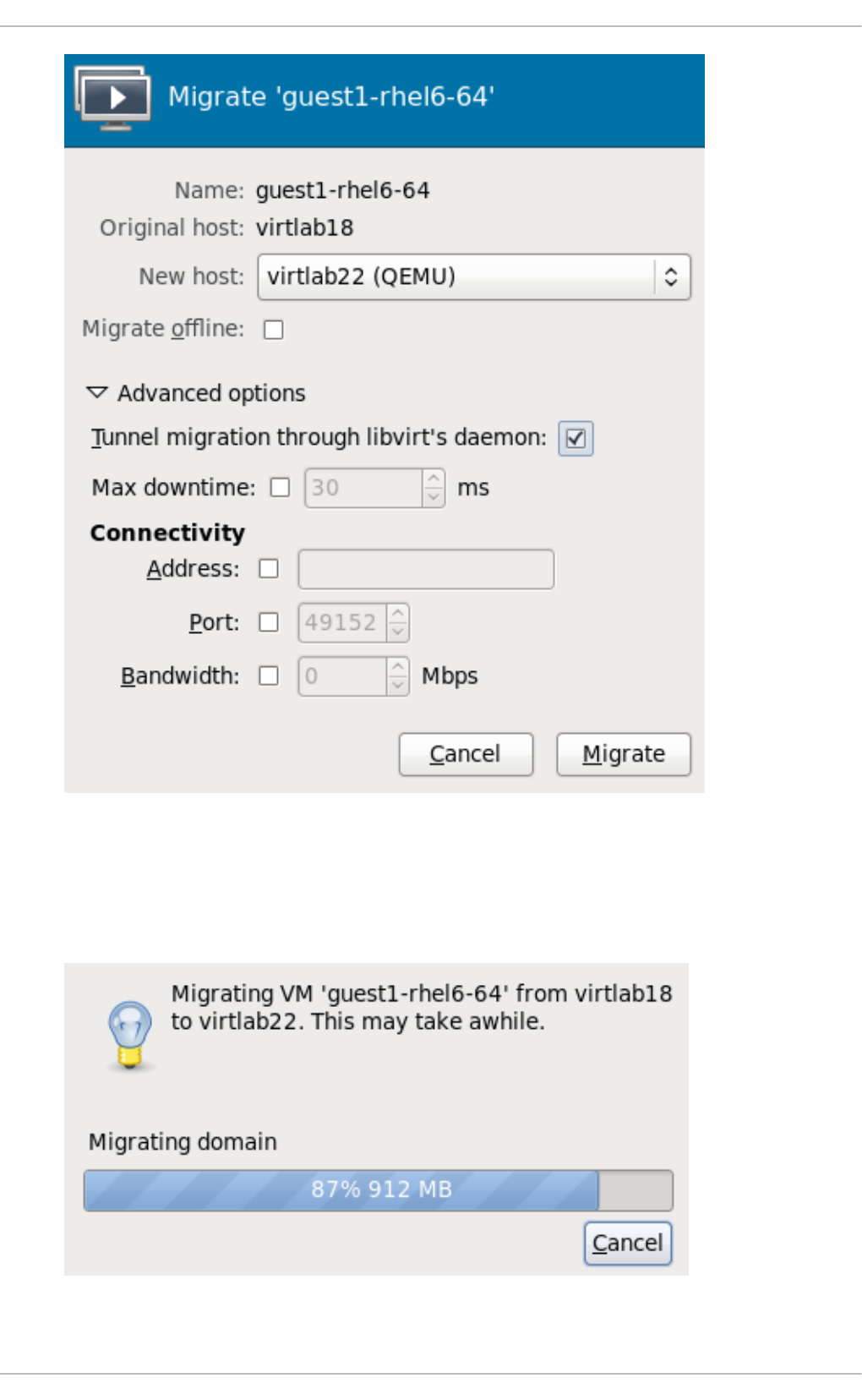

- Chapter 10. Network booting with libvirt

- Chapter 11. Enhancing virtualization with the QEMU guest agent and SPICE agent

- Chapter 12. Nested Virtualization

- Part II. Administration

- Chapter 13. Securing the host physical machine and improving performance

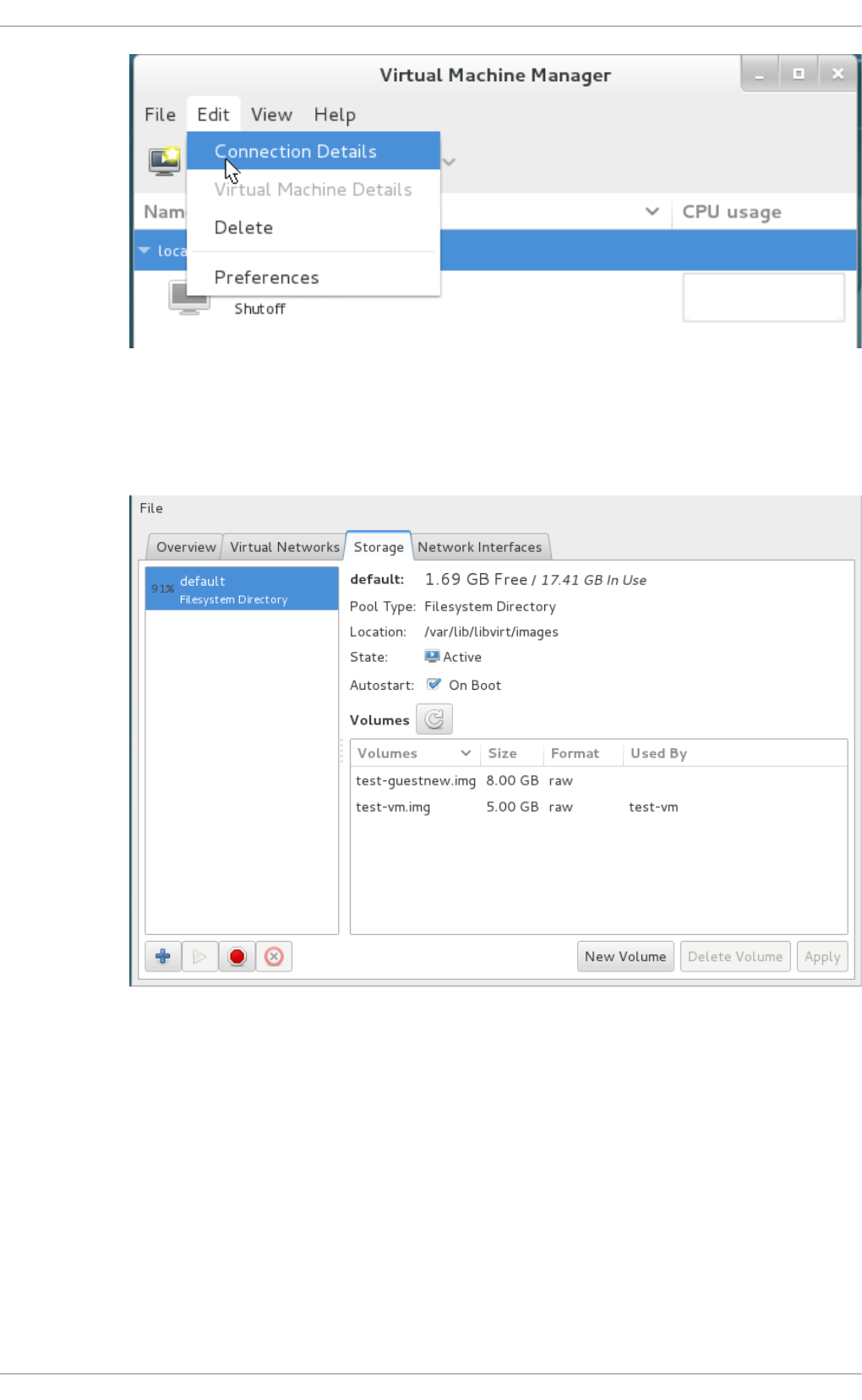

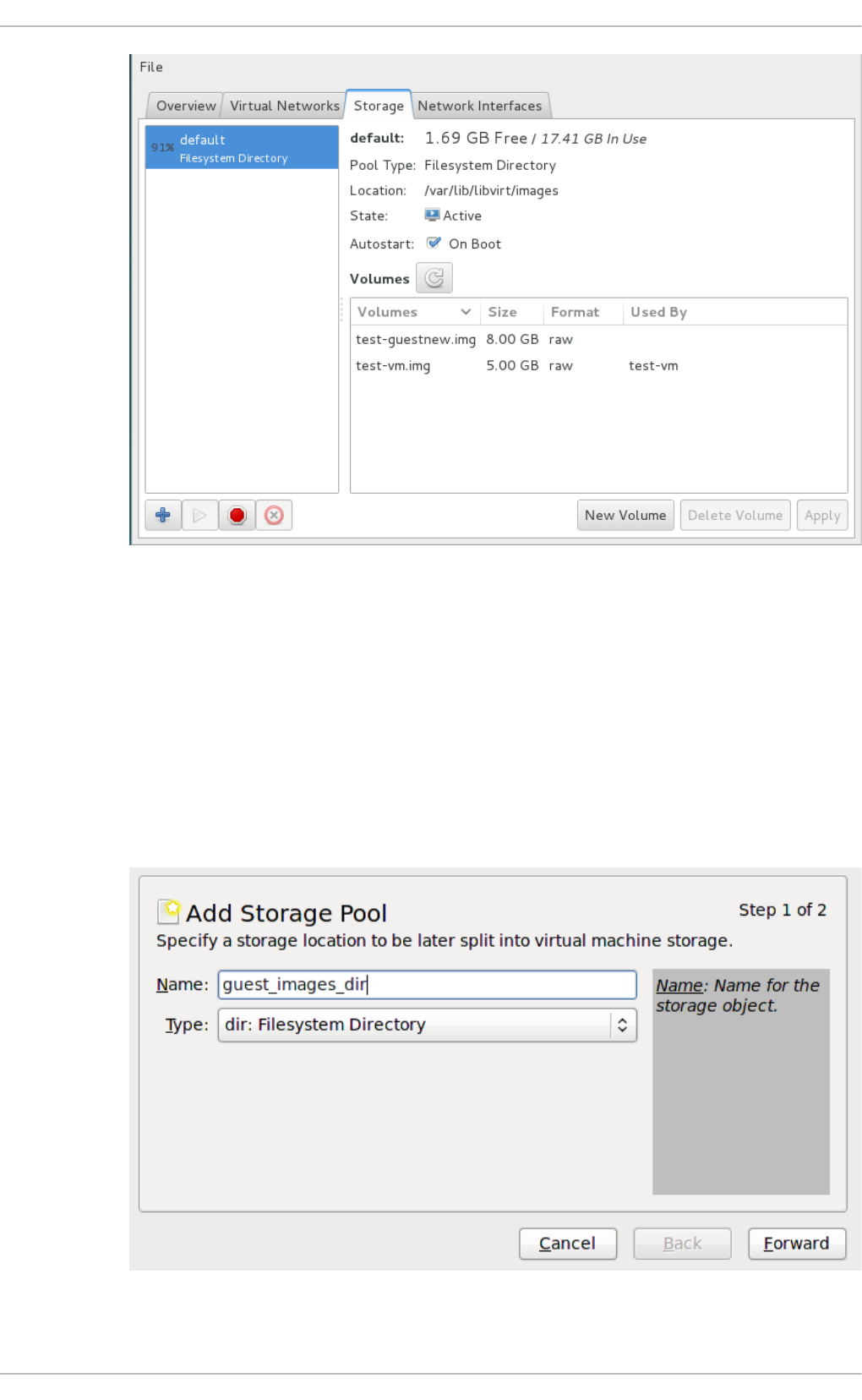

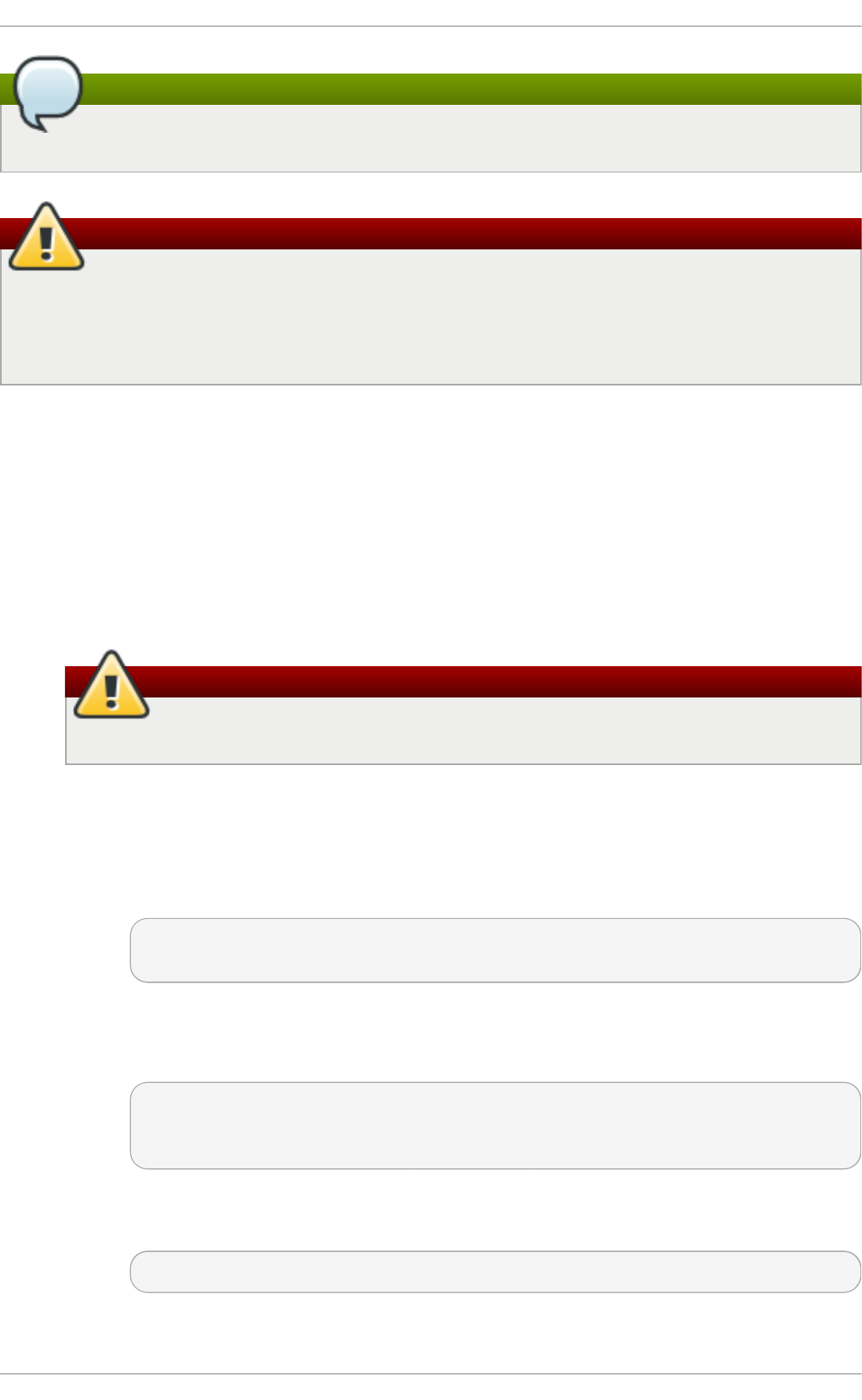

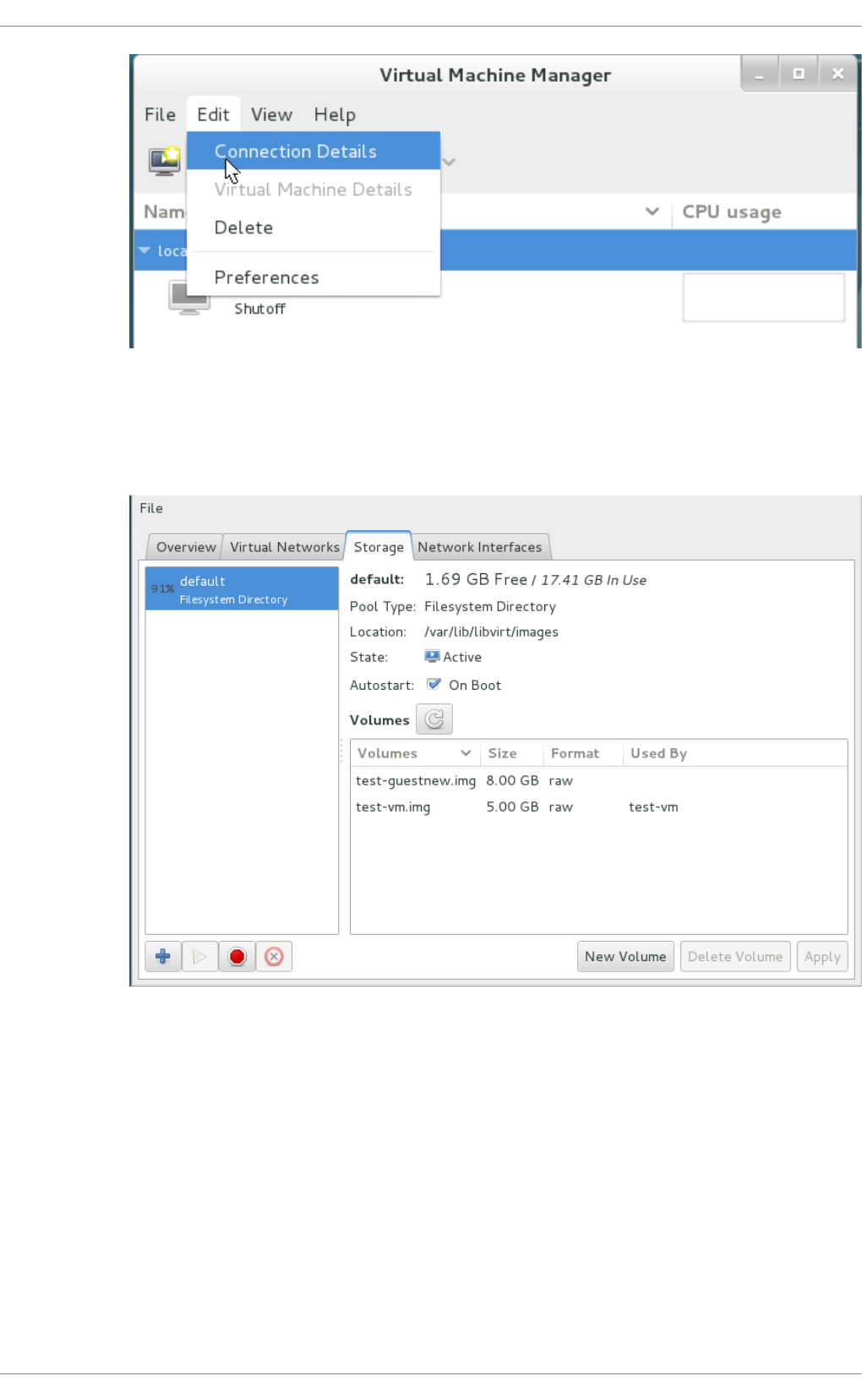

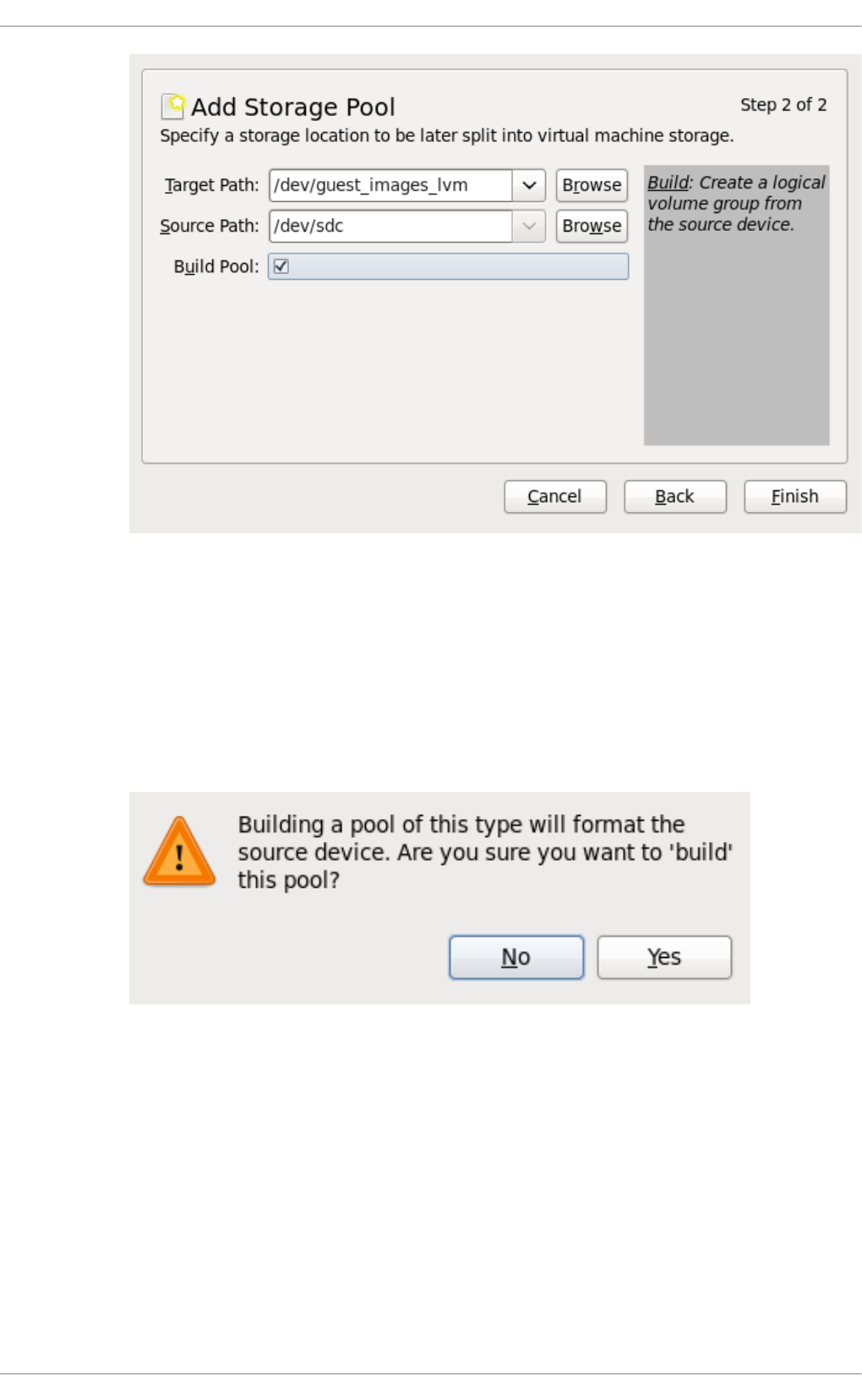

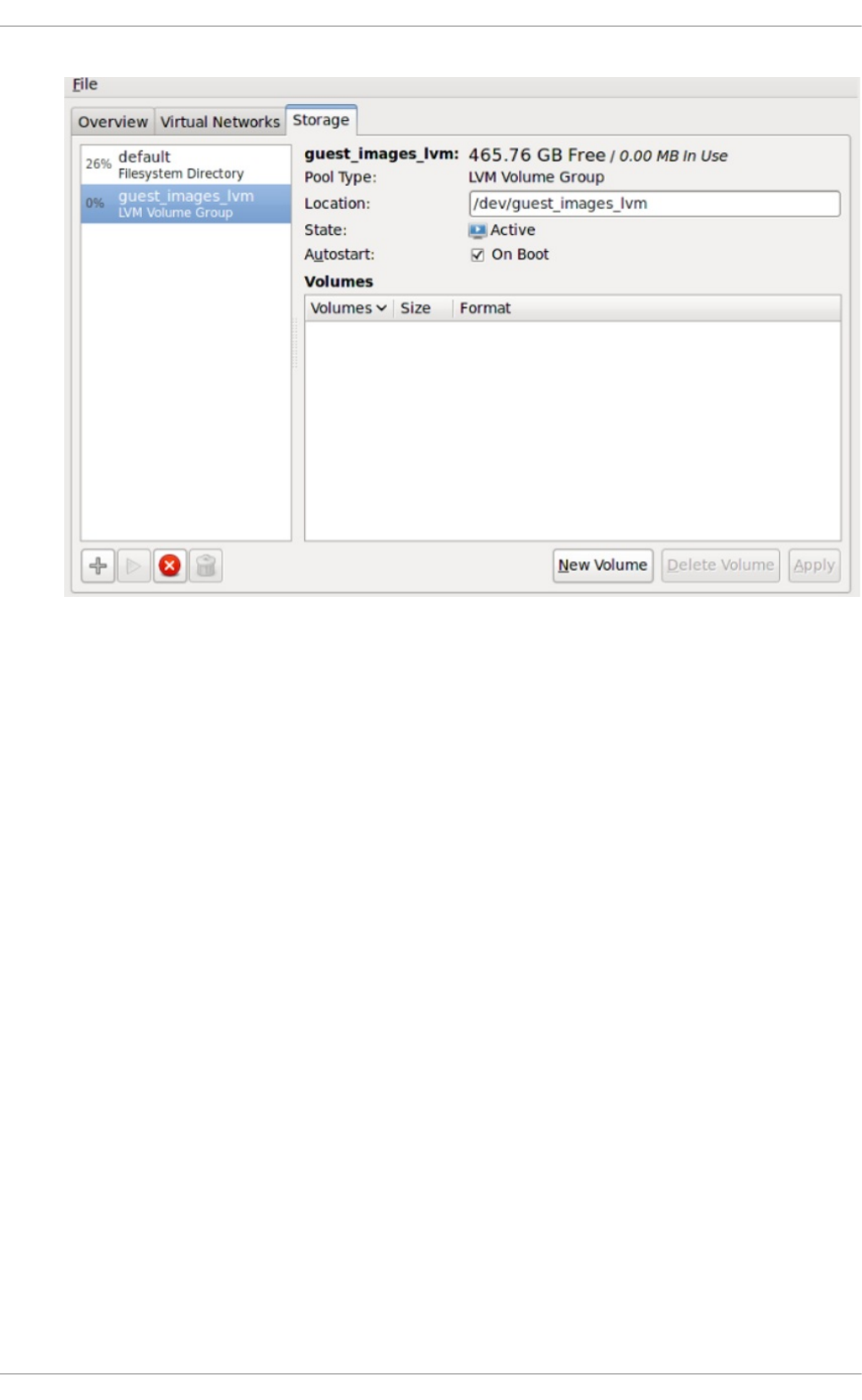

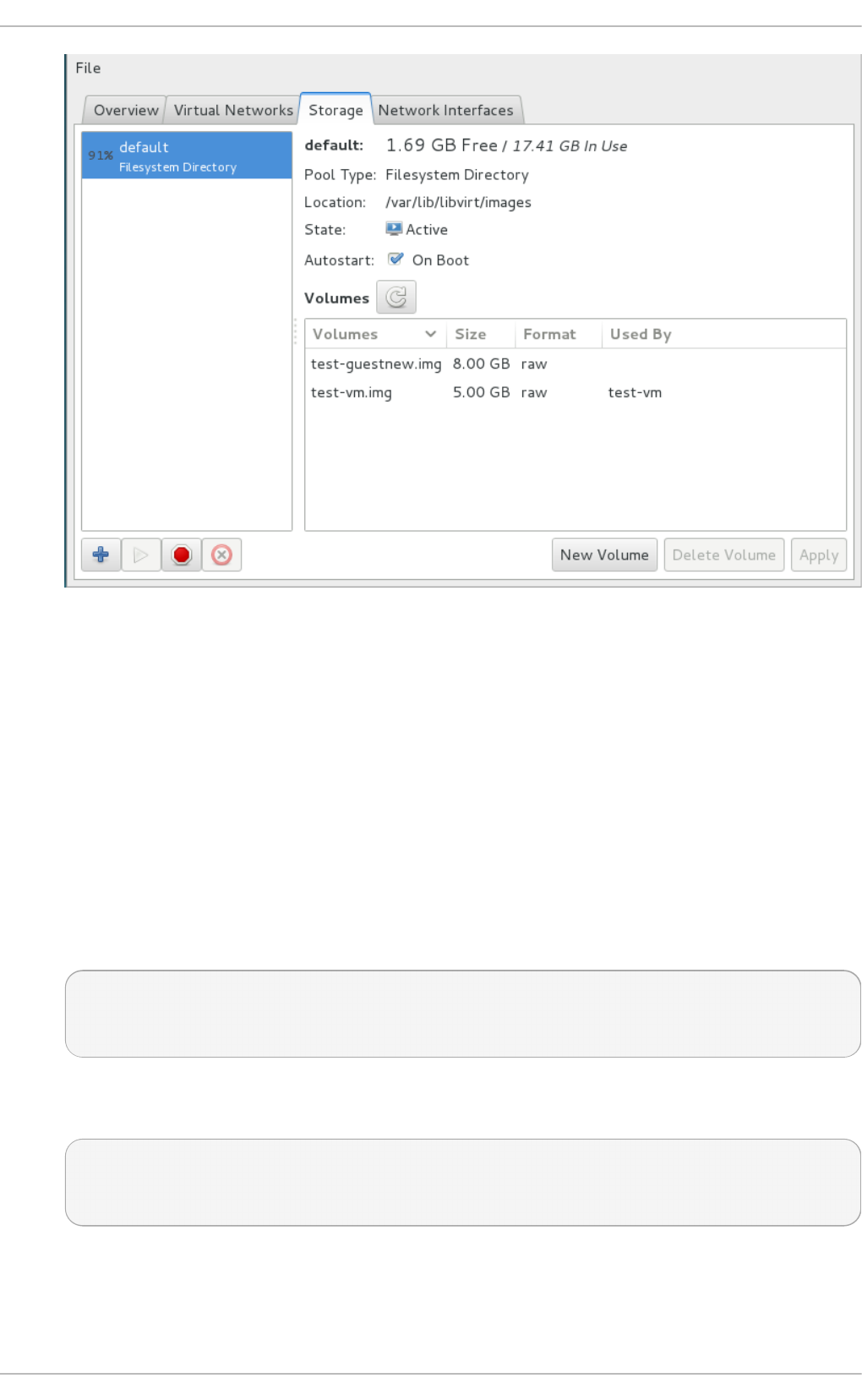

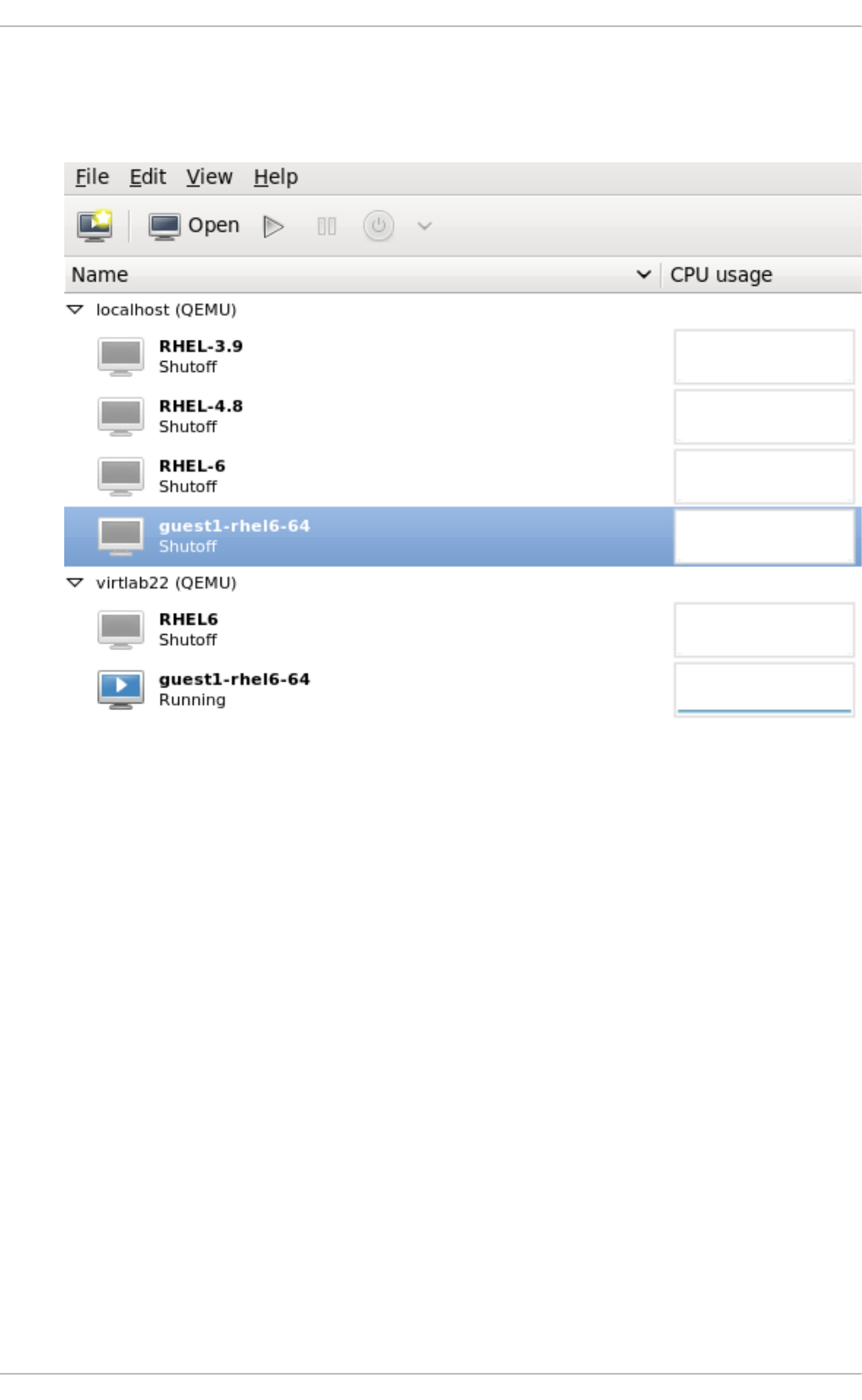

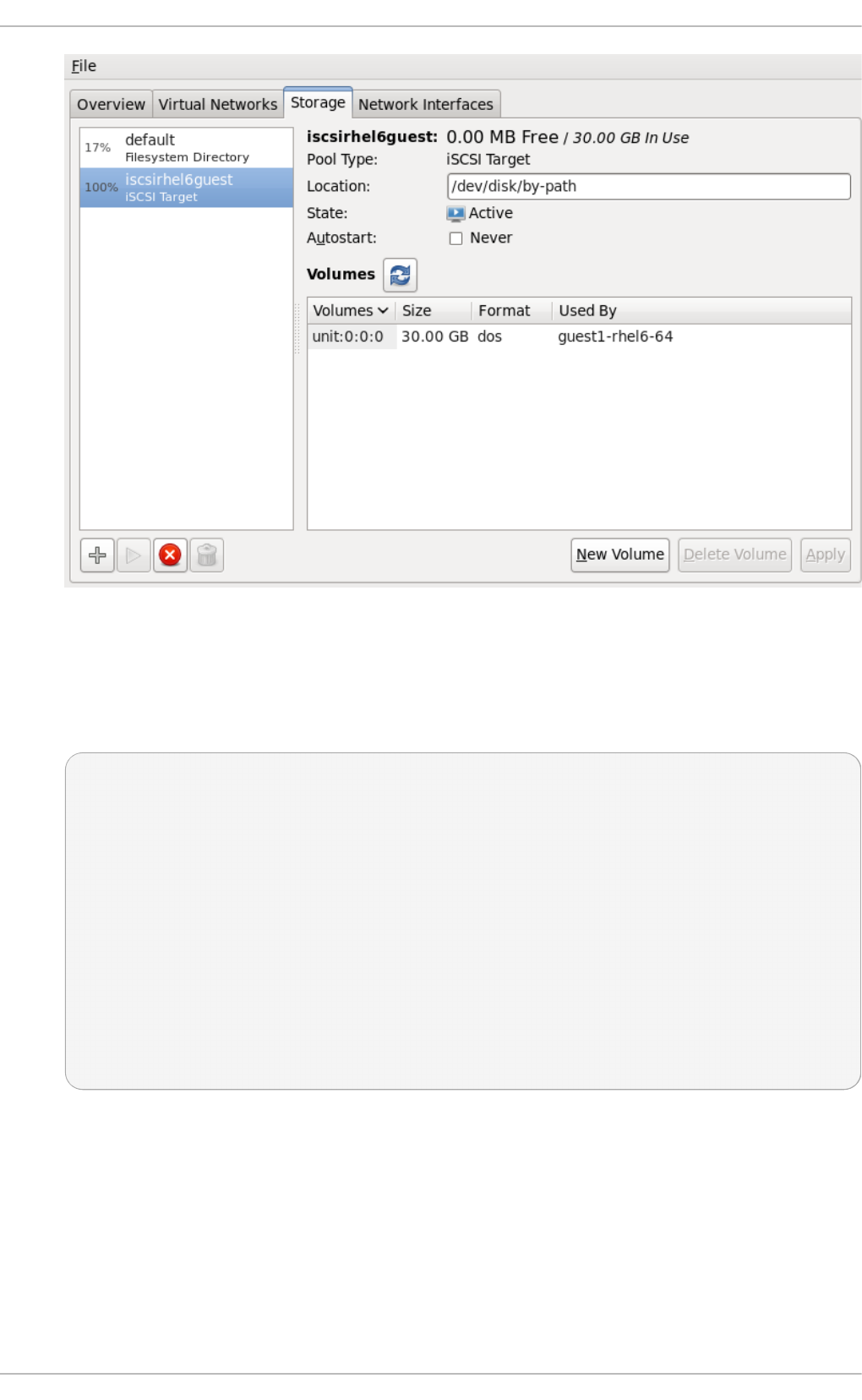

- Chapter 14. Storage pools

- Chapter 15. Storage Volumes

- Chapter 16. Using qemu-img

- 16.1. Checking the disk image

- 16.2. Committing changes to an image

- 16.3. Converting an existing image to another format

- 16.4. Creating and formatting new images or devices

- 16.5. Displaying image information

- 16.6. Re-basing a backing file of an image

- 16.7. Re-sizing the disk image

- 16.8. Listing, creating, applying, and deleting a snapshot

- 16.9. Supported qemu-img formats

- Chapter 17. KVM live migration

- Chapter 18. Guest virtual machine device configuration

- 18.1. PCI devices

- 18.1.1. Assigning a PCI device with virsh

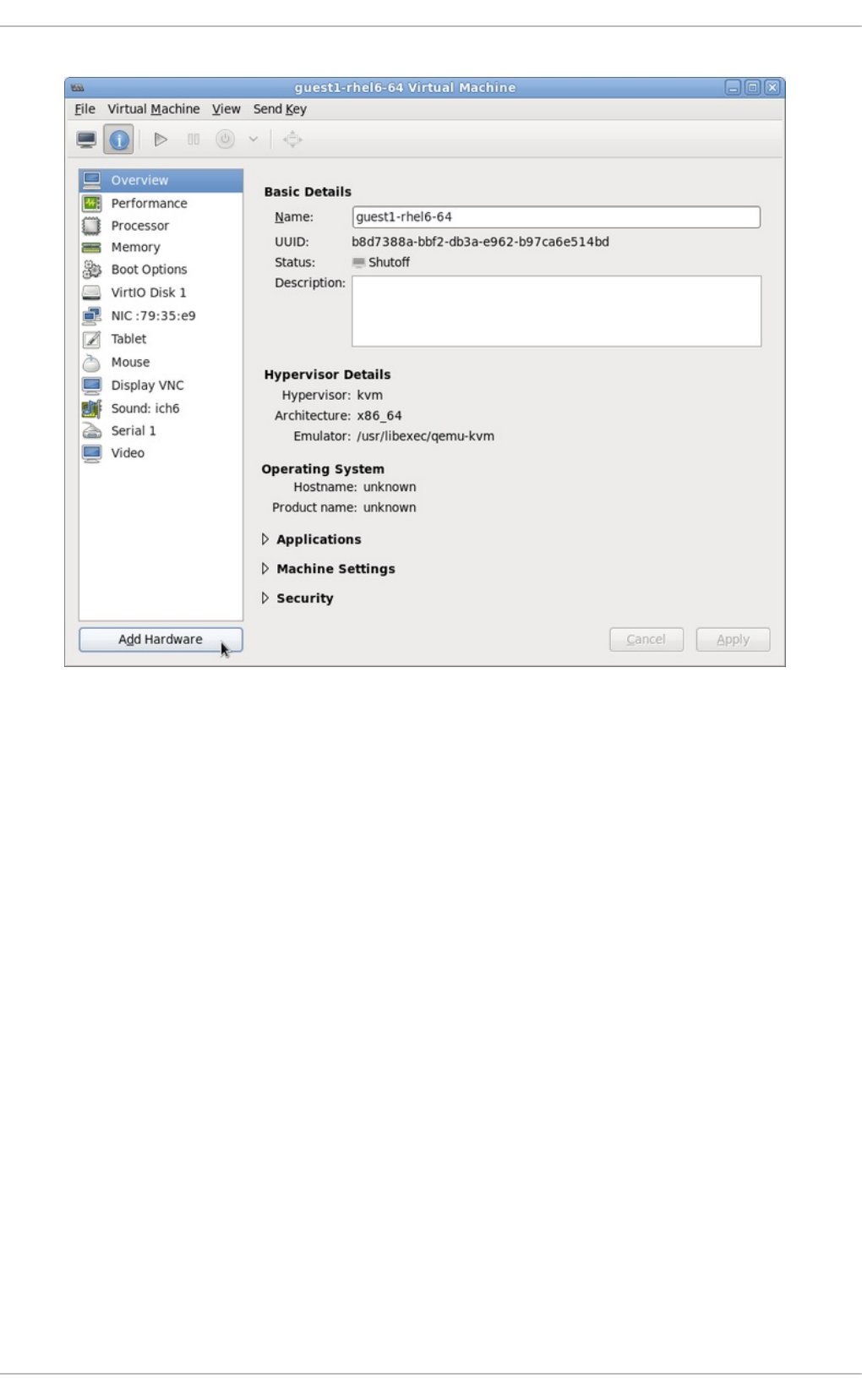

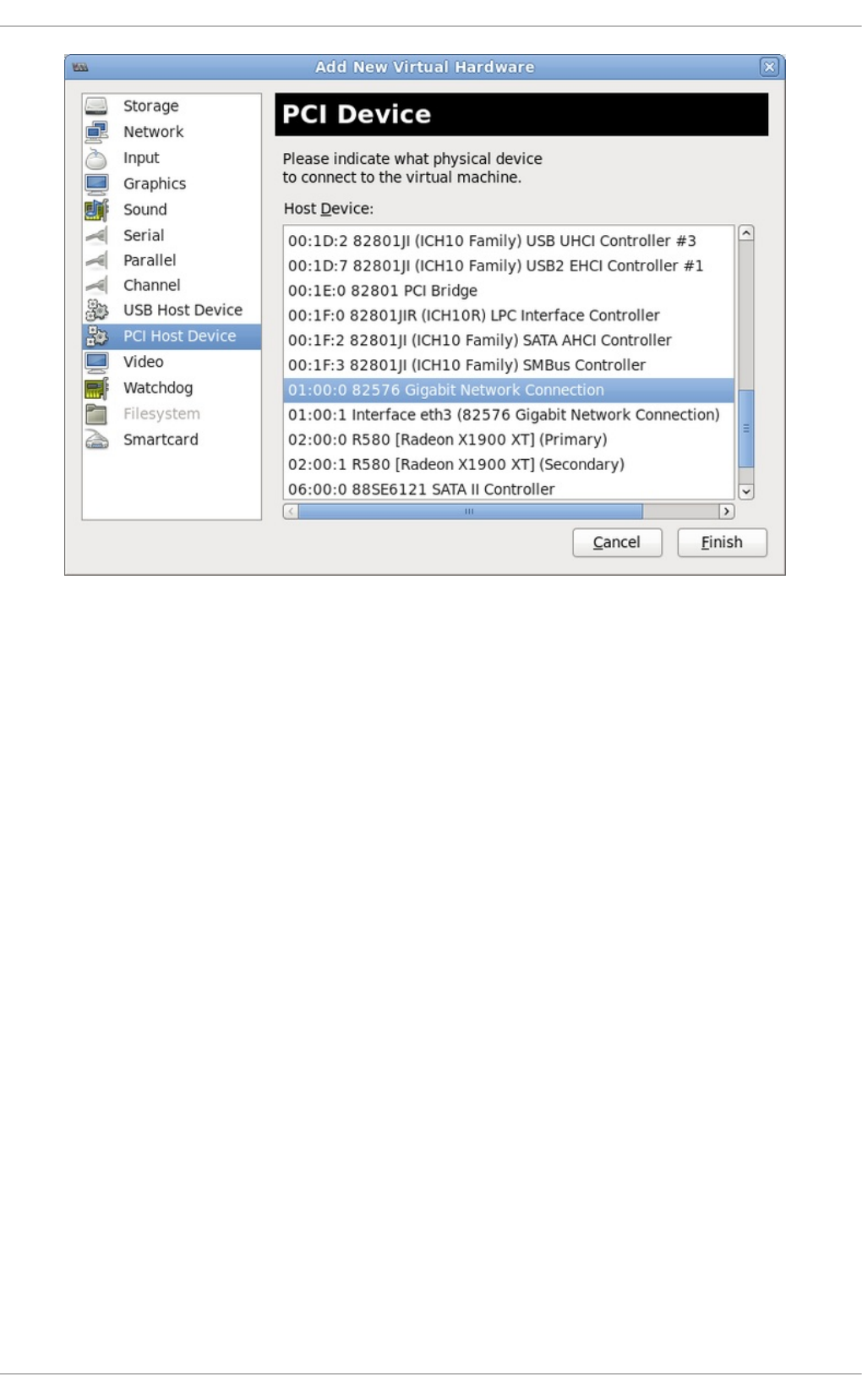

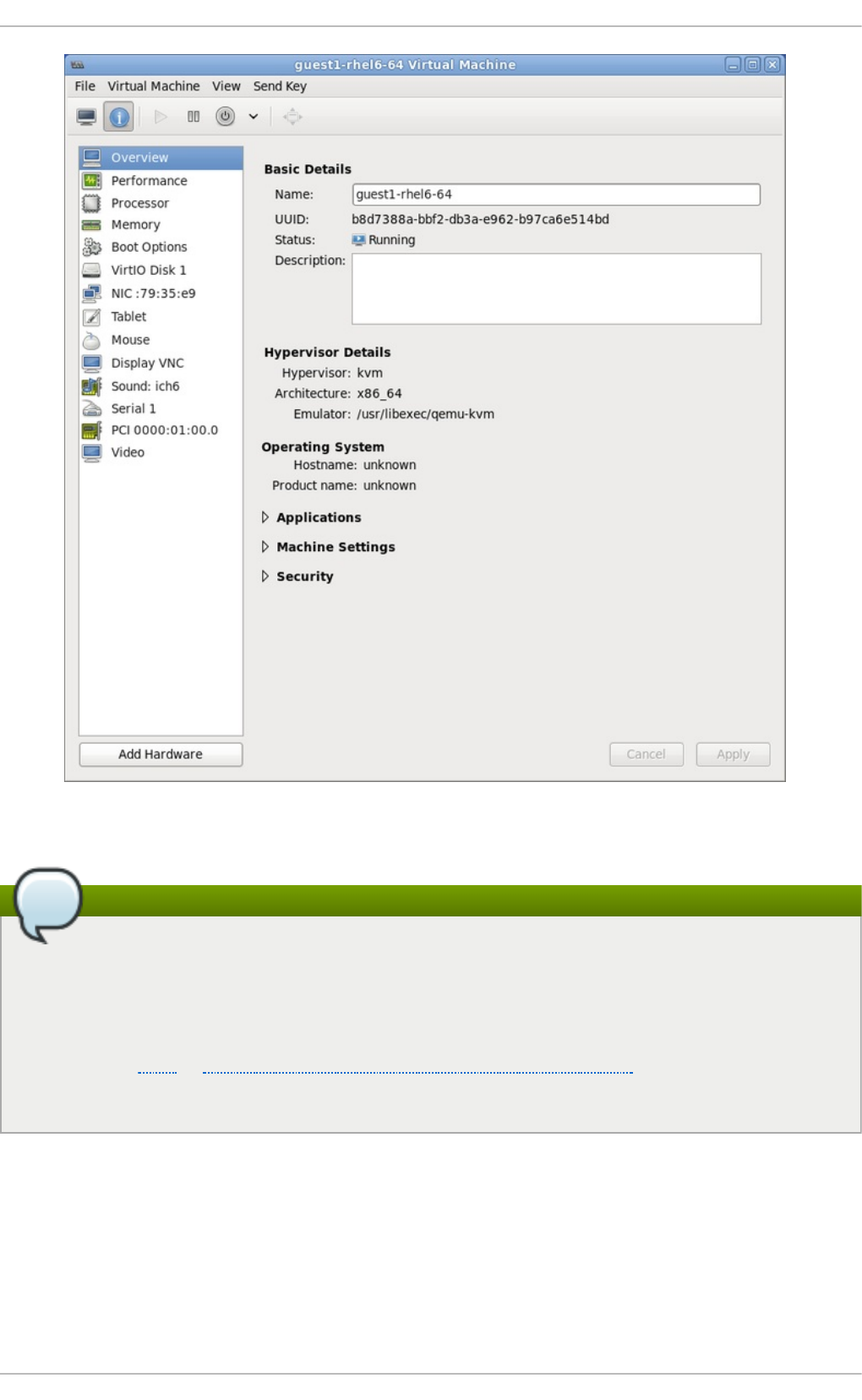

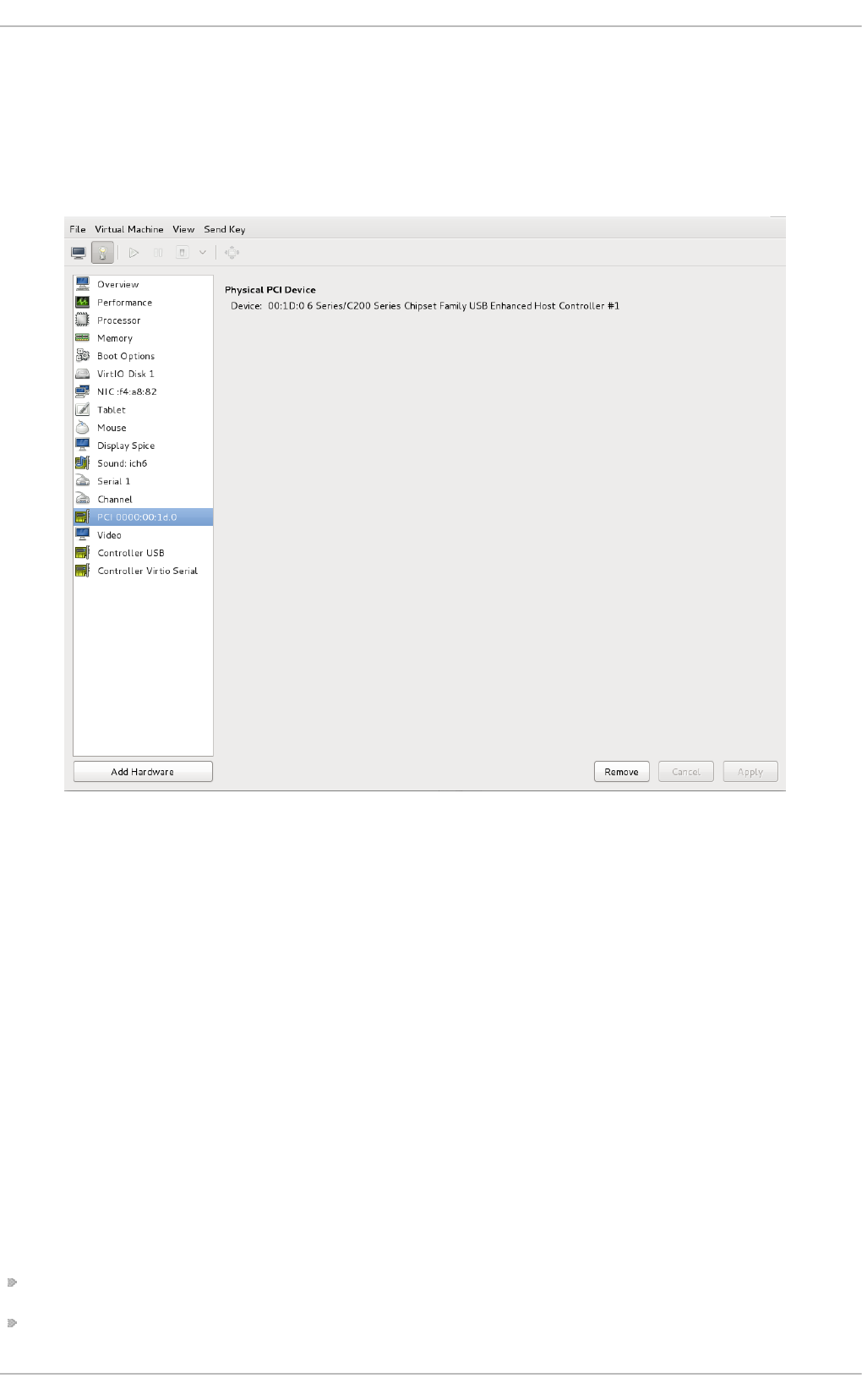

- 18.1.2. Assigning a PCI device with virt-manager

- 18.1.3. PCI device assignment with virt-install

- 18.1.4. Detaching an assigned PCI device

- 18.1.5. Creating PCI bridges

- 18.1.6. PCI passthrough

- 18.1.7. Configuring PCI assignment (passthrough) with SR-IOV devices

- 18.1.8. Setting PCI device assignment from a pool of SR-IOV virtual functions

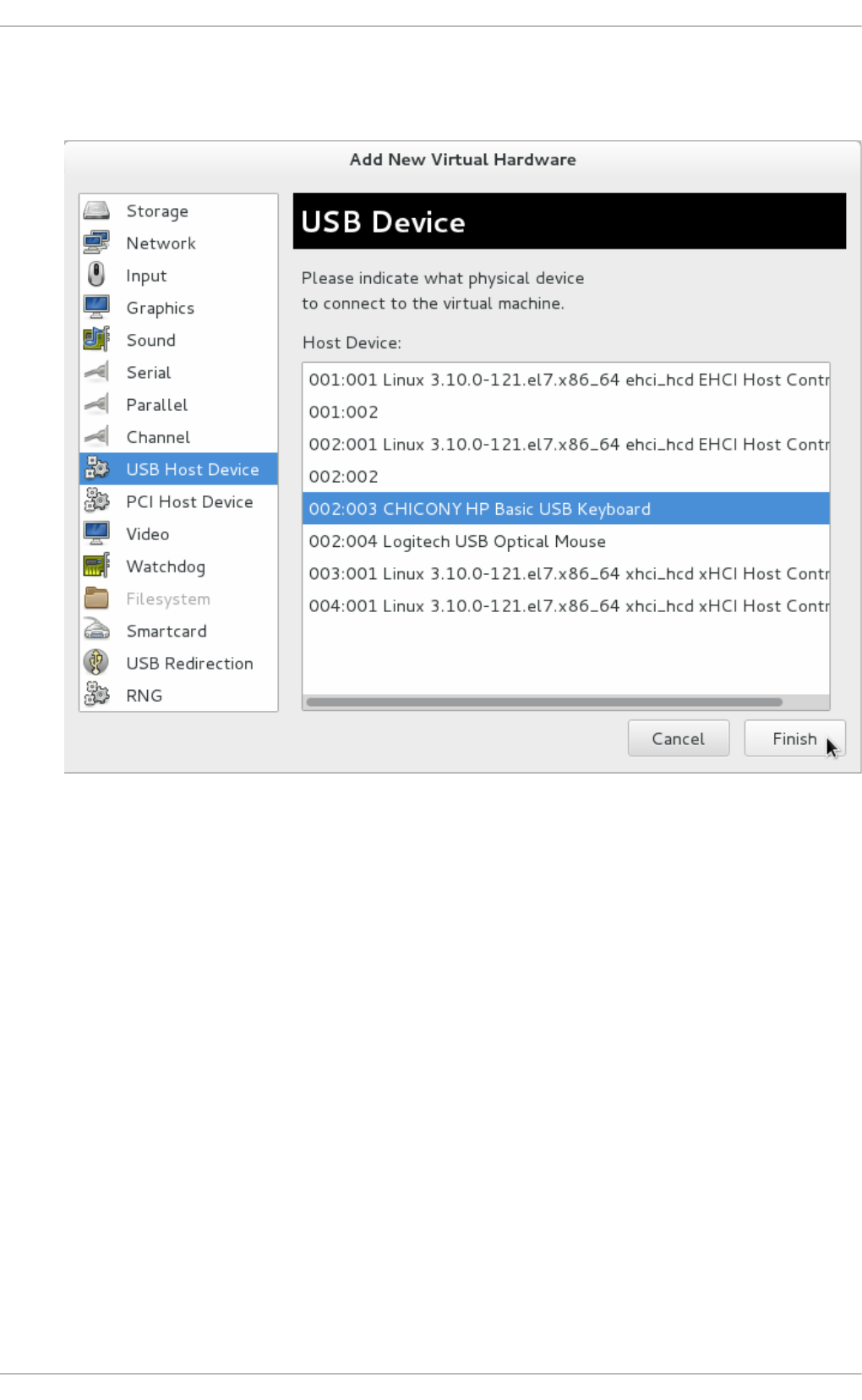

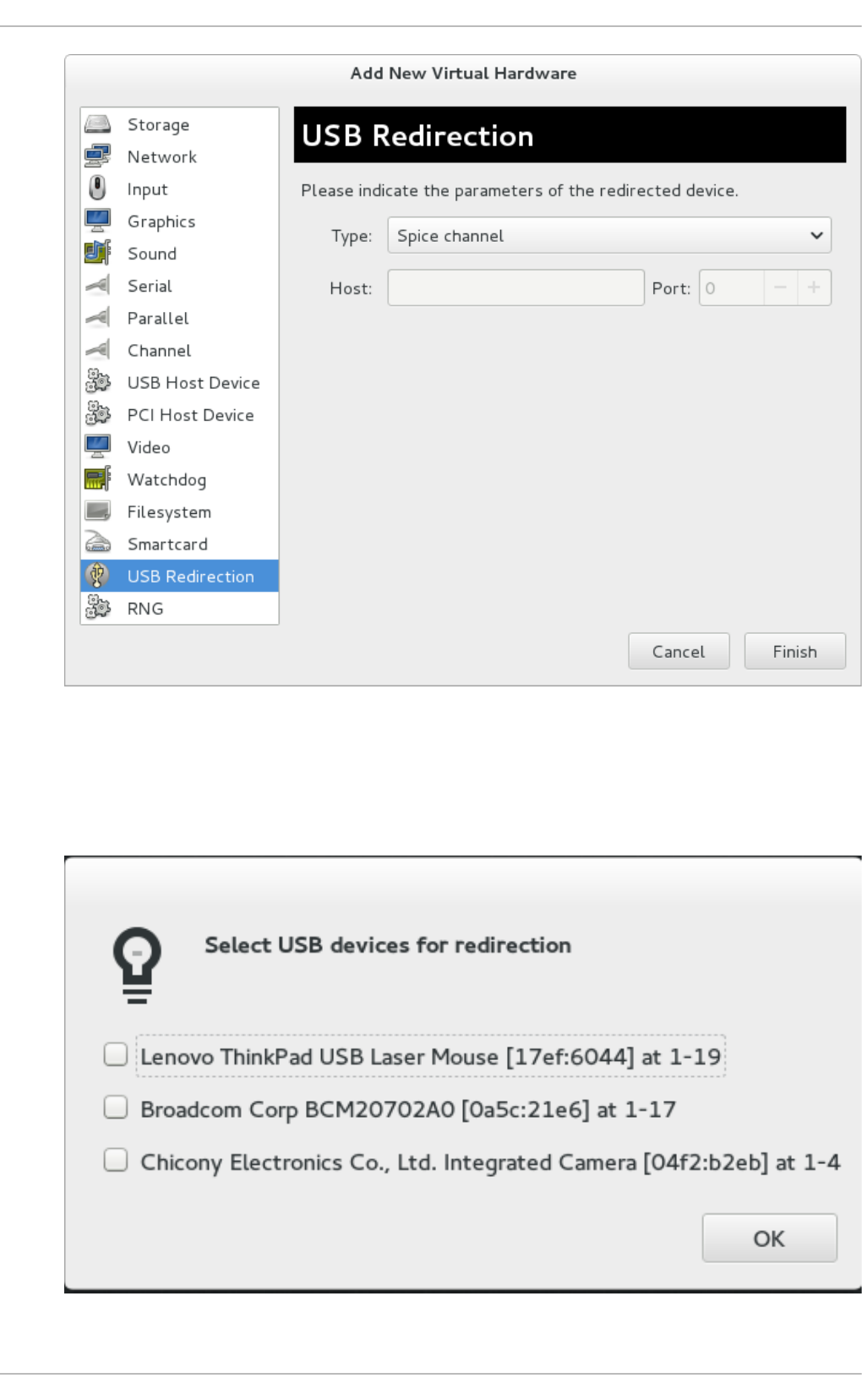

- 18.2. USB devices

- 18.3. Configuring device controllers

- 18.4. Setting addresses for devices

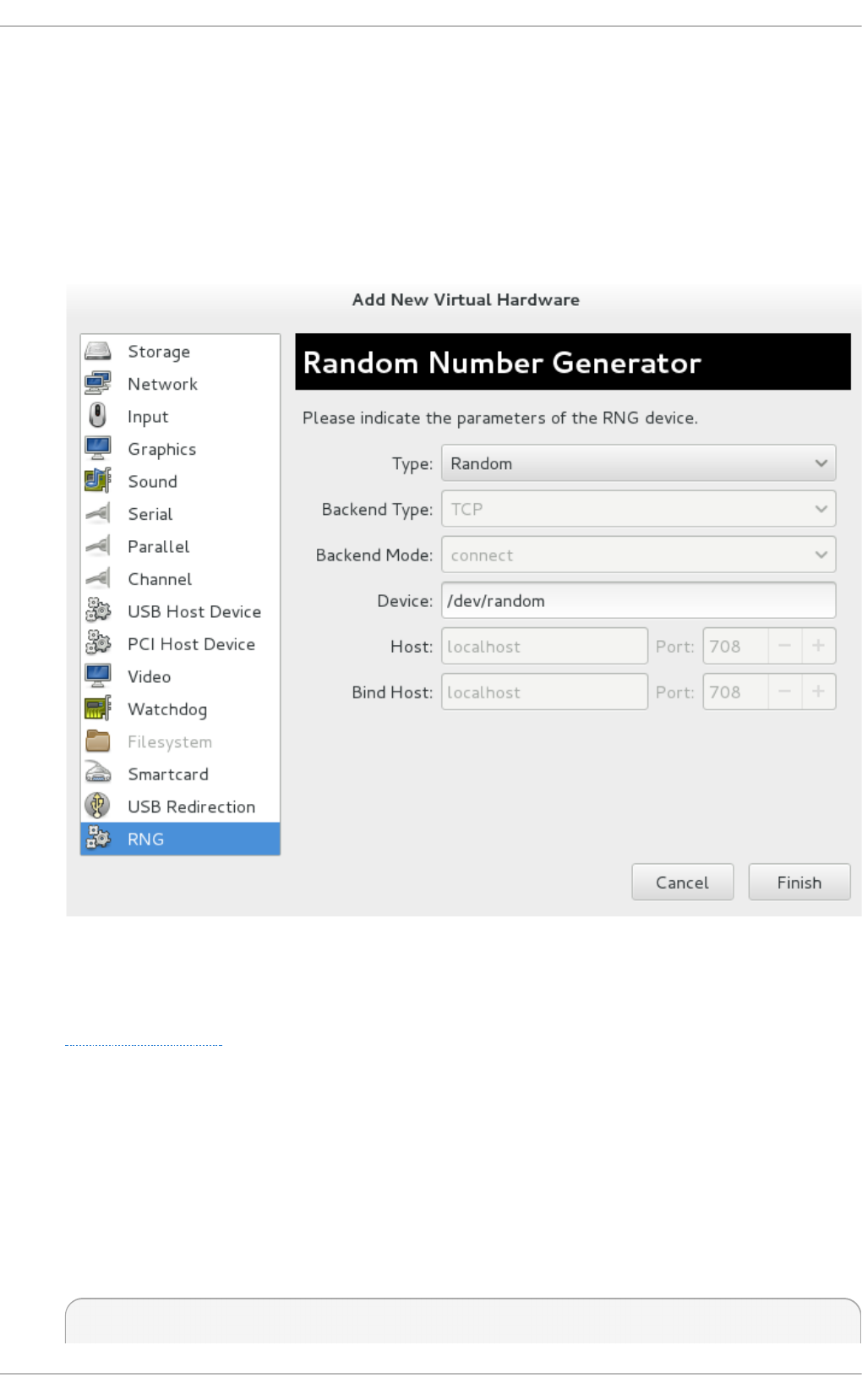

- 18.5. Random number generator device

- 18.6. Assigning GPU devices

- 18.1. PCI devices

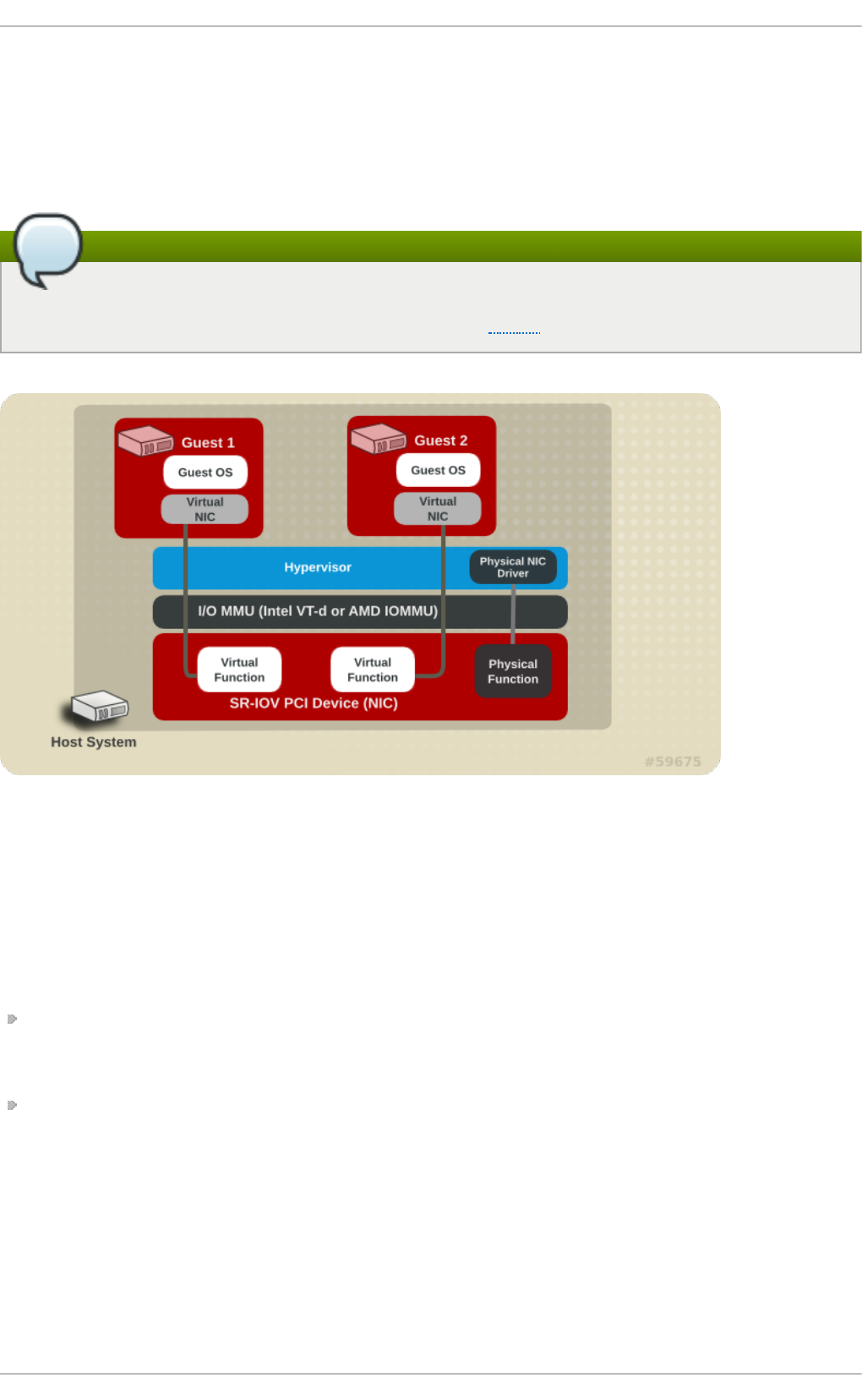

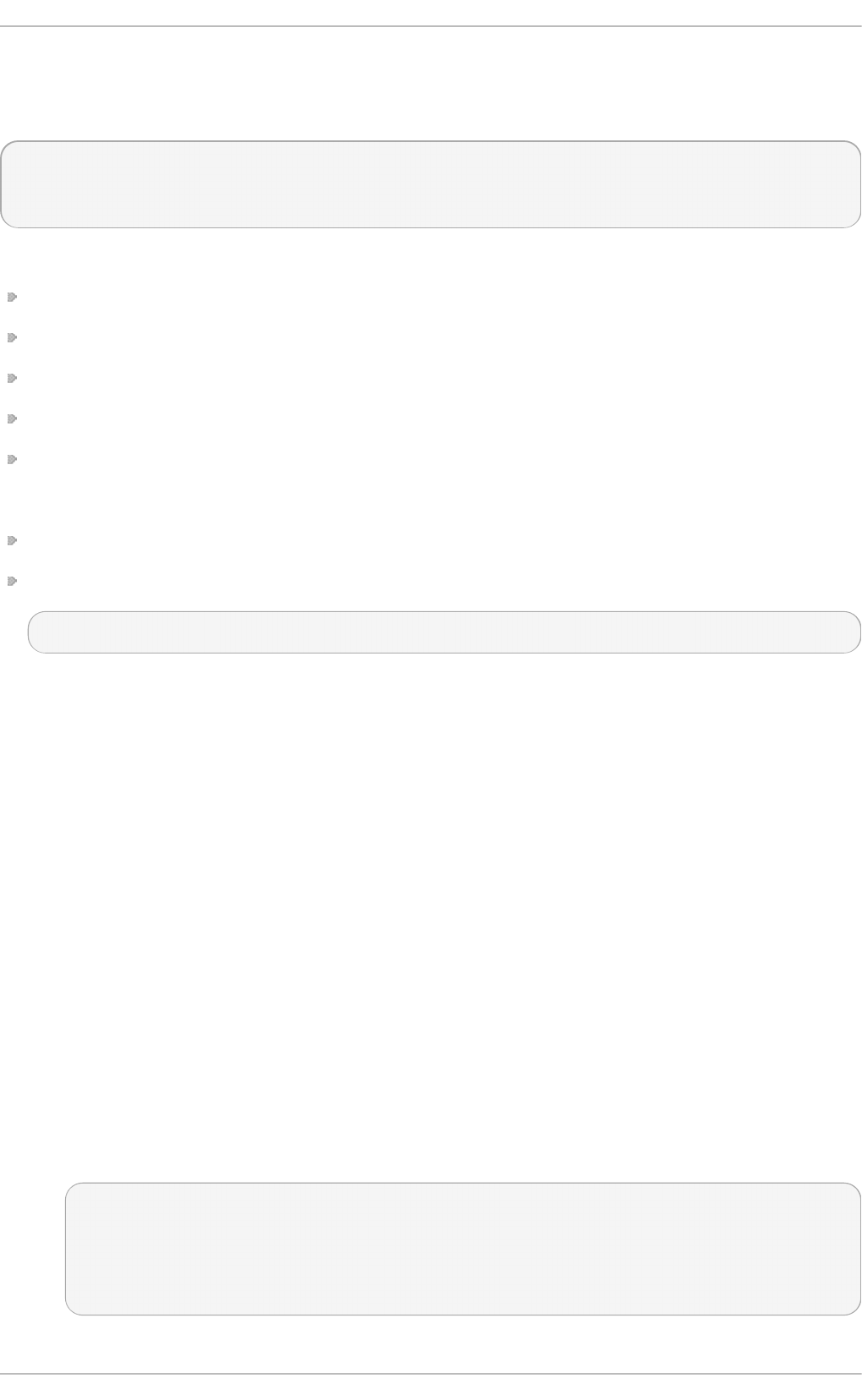

- Chapter 19. SR-IOV

- Chapter 20. Virtual Networking

- 20.1. Virtual network switches

- 20.2. Bridge Mode

- 20.3. Network Address Translation

- 20.4. DNS and DHCP

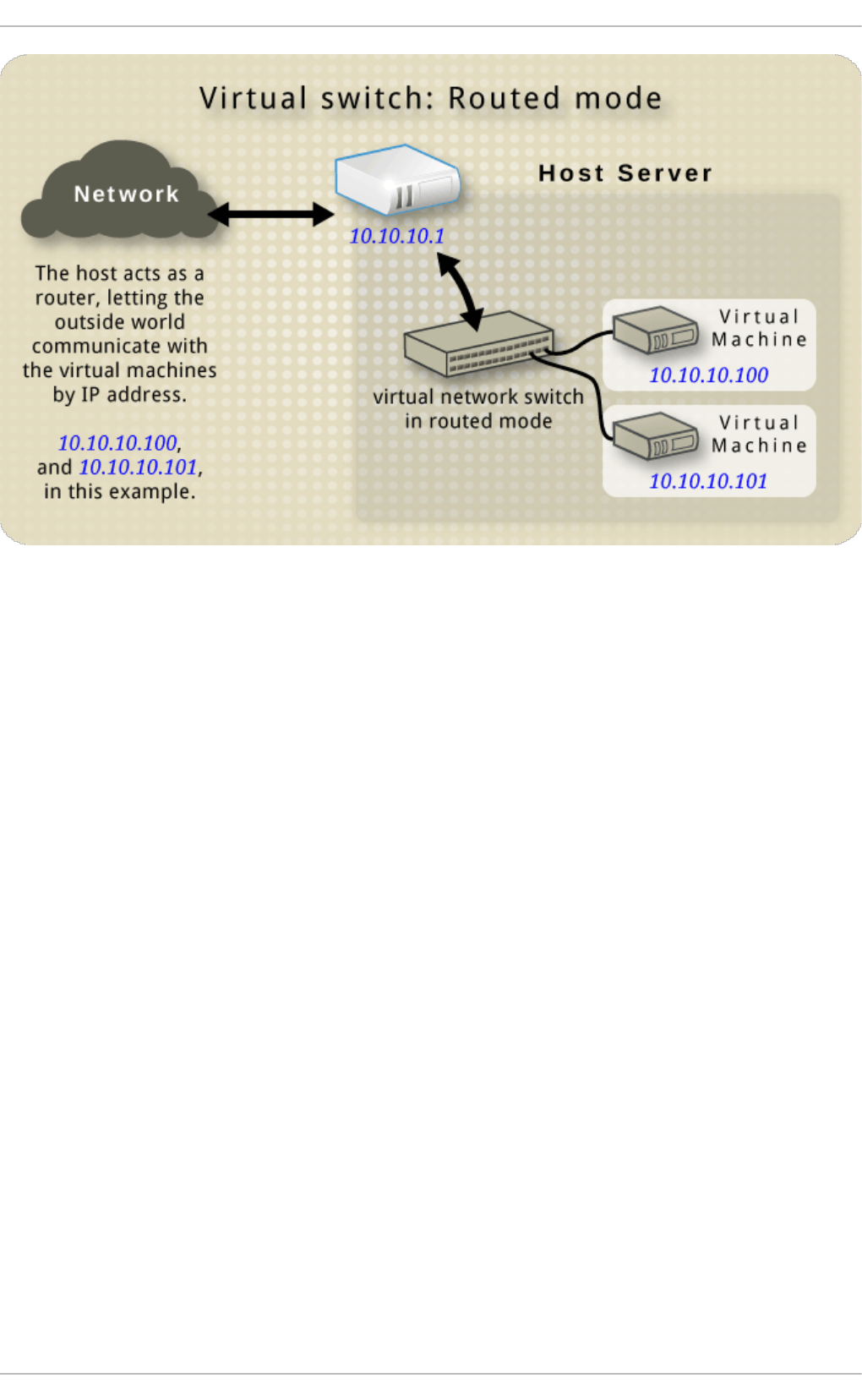

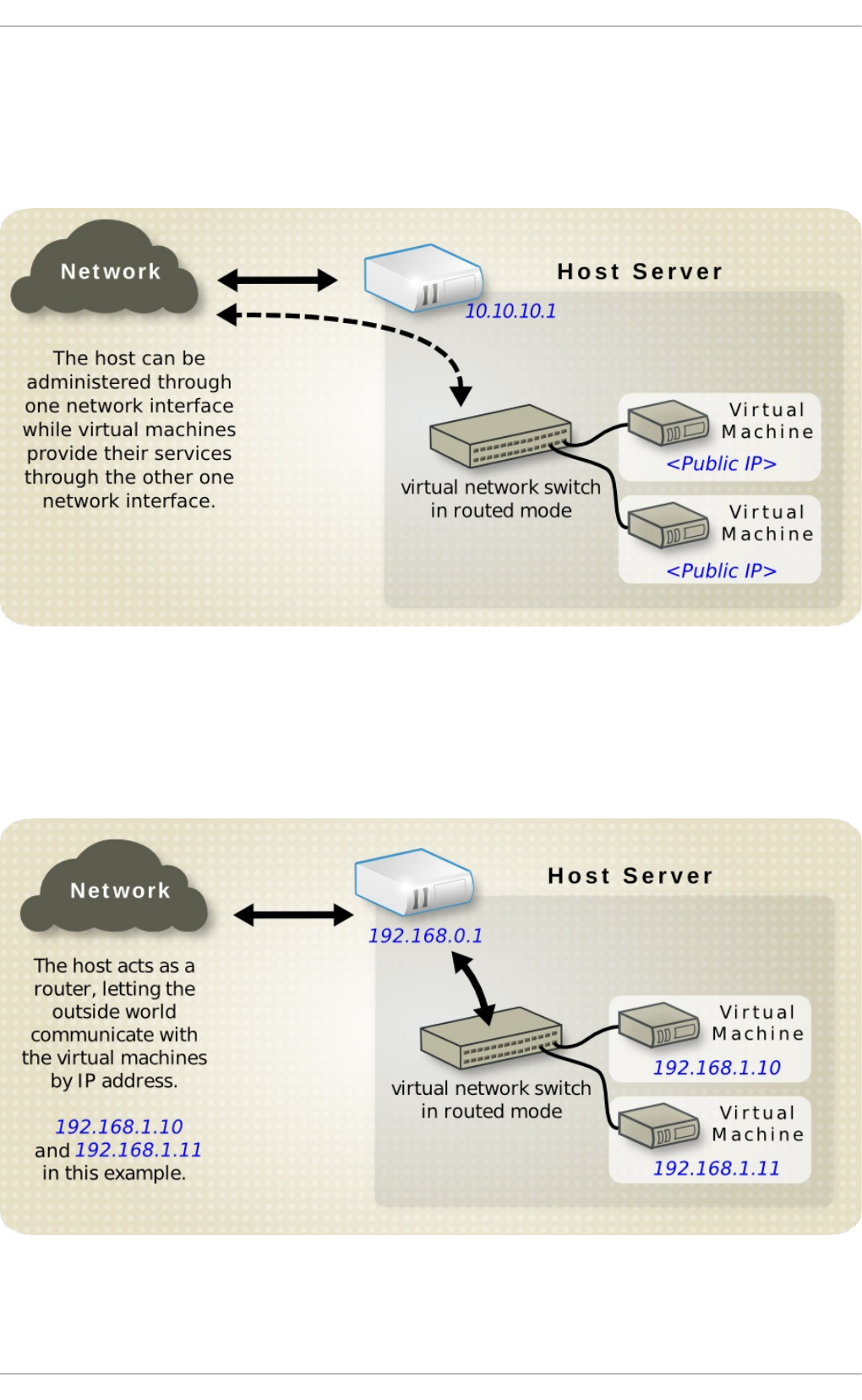

- 20.5. Routed mode

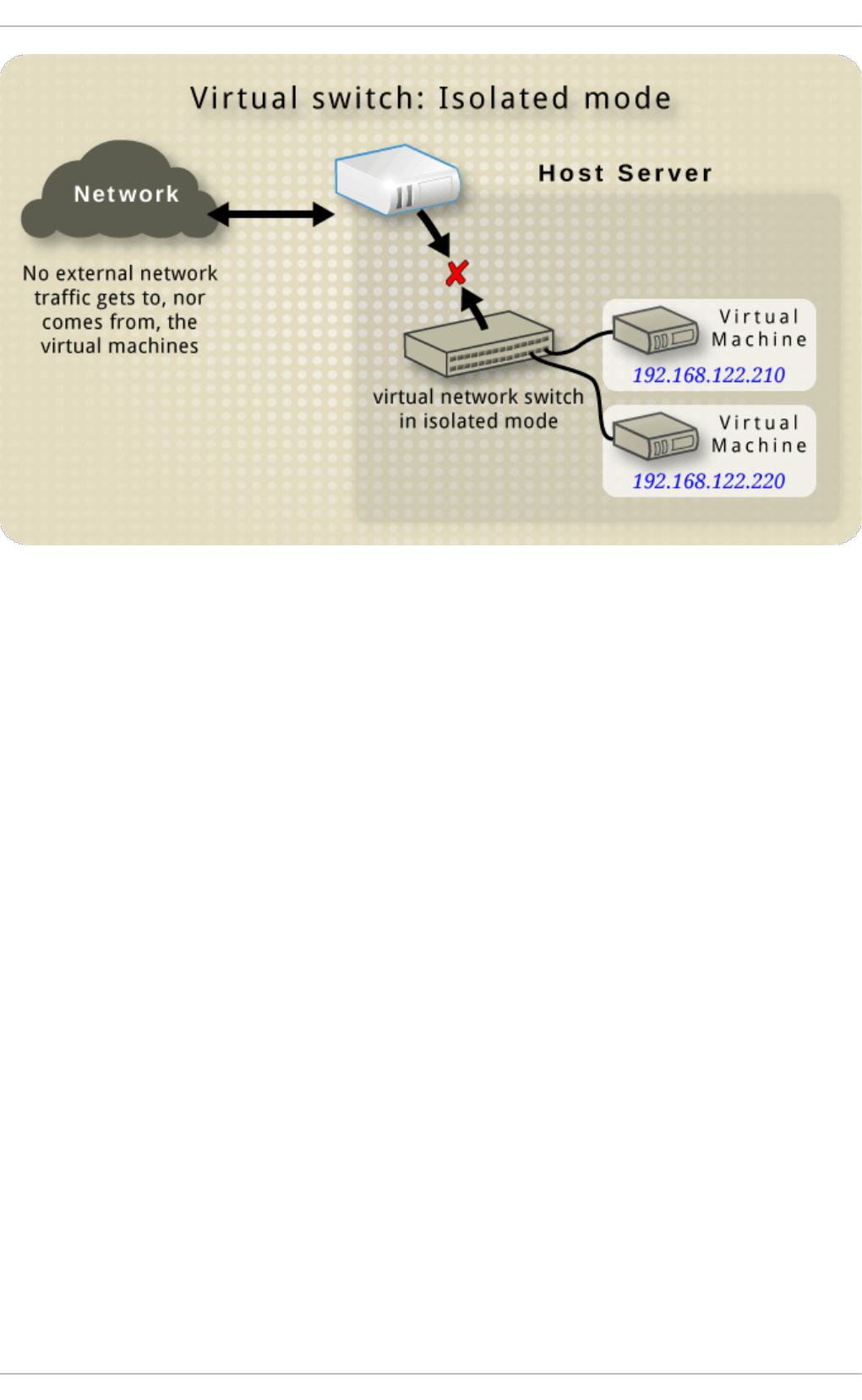

- 20.6. Isolated mode

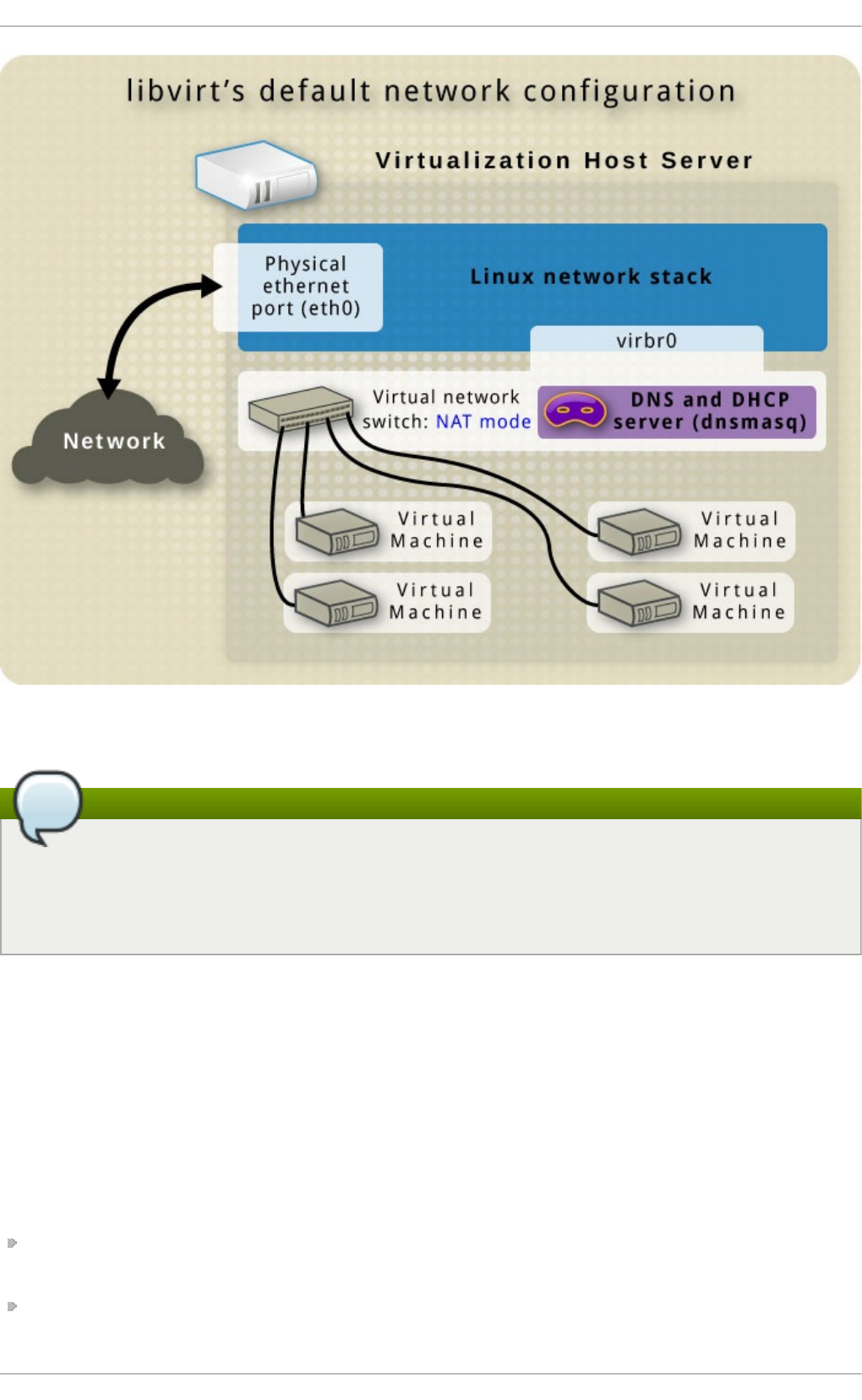

- 20.7. The default configuration

- 20.8. Examples of common scenarios

- 20.9. Managing a virtual network

- 20.10. Creating a virtual network

- 20.11. Attaching a virtual network to a guest

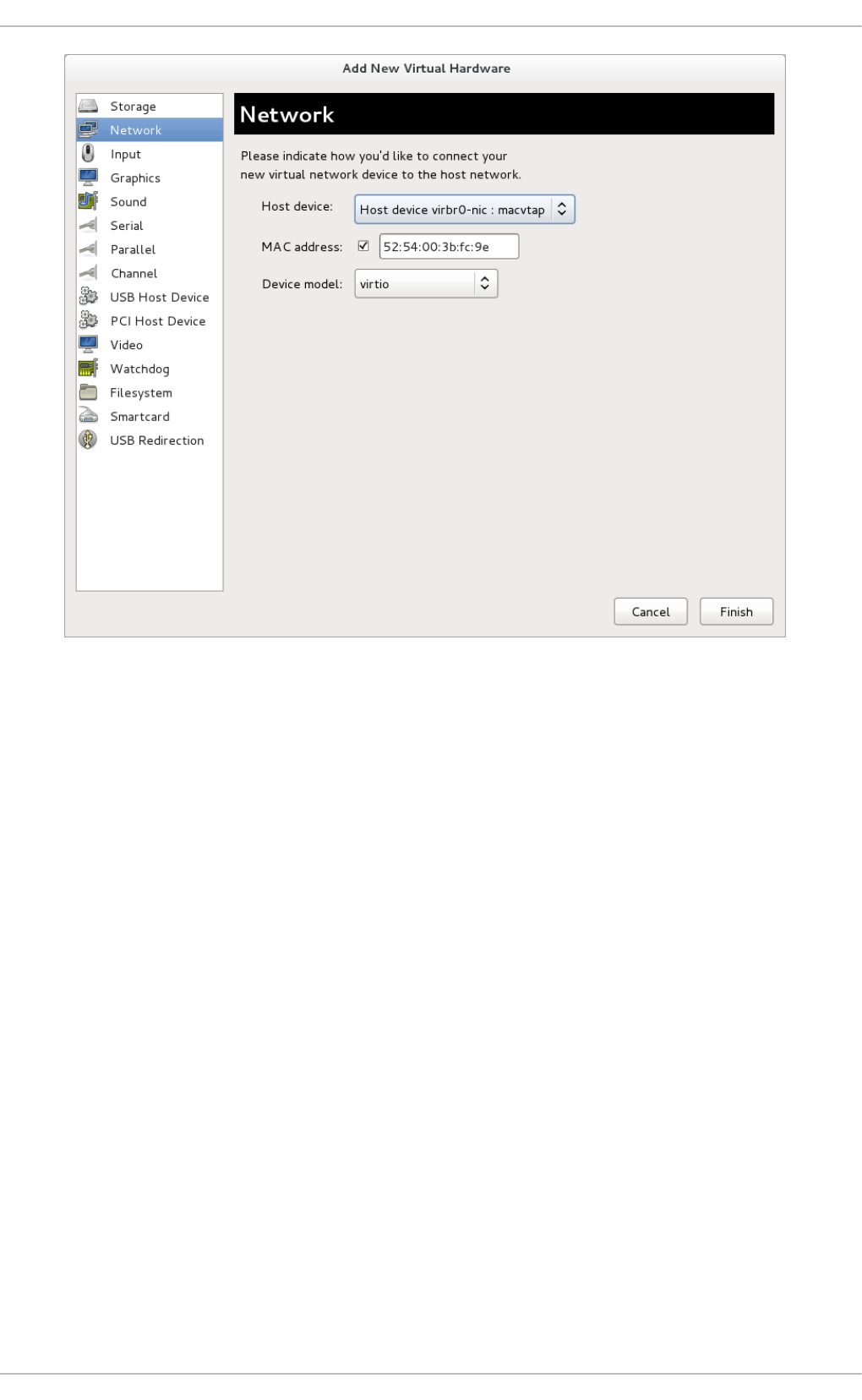

- 20.12. Directly attaching to physical interface

- 20.13. Dynamically changing a host physical machine or a network bridge that is attached to a virtual NIC

- 20.14. Applying network filtering

- 20.14.1. Introduction

- 20.14.2. Filtering chains

- 20.14.3. Filtering chain priorities

- 20.14.4. Usage of variables in filters

- 20.14.5. Automatic IP address detection and DHCP snooping

- 20.14.6. Reserved Variables

- 20.14.7. Element and attribute overview

- 20.14.8. References to other filters

- 20.14.9. Filter rules

- 20.14.10. Supported protocols

- 20.14.10.1. MAC (Ethernet)

- 20.14.10.2. VLAN (802.1Q)

- 20.14.10.3. STP (Spanning Tree Protocol)

- 20.14.10.4. ARP/RARP

- 20.14.10.5. IPv4

- 20.14.10.6. IPv6

- 20.14.10.7. TCP/UDP/SCTP

- 20.14.10.8. ICMP

- 20.14.10.9. IGMP, ESP, AH, UDPLITE, 'ALL'

- 20.14.10.10. TCP/UDP/SCTP over IPV6

- 20.14.10.11. ICMPv6

- 20.14.10.12. IGMP, ESP, AH, UDPLITE, 'ALL' over IPv6

- 20.14.11. Advanced Filter Configuration Topics

- 20.14.12. Limitations

- 20.15. Creating Tunnels

- 20.16. Setting vLAN tags

- 20.17. Applying QoS to your virtual network

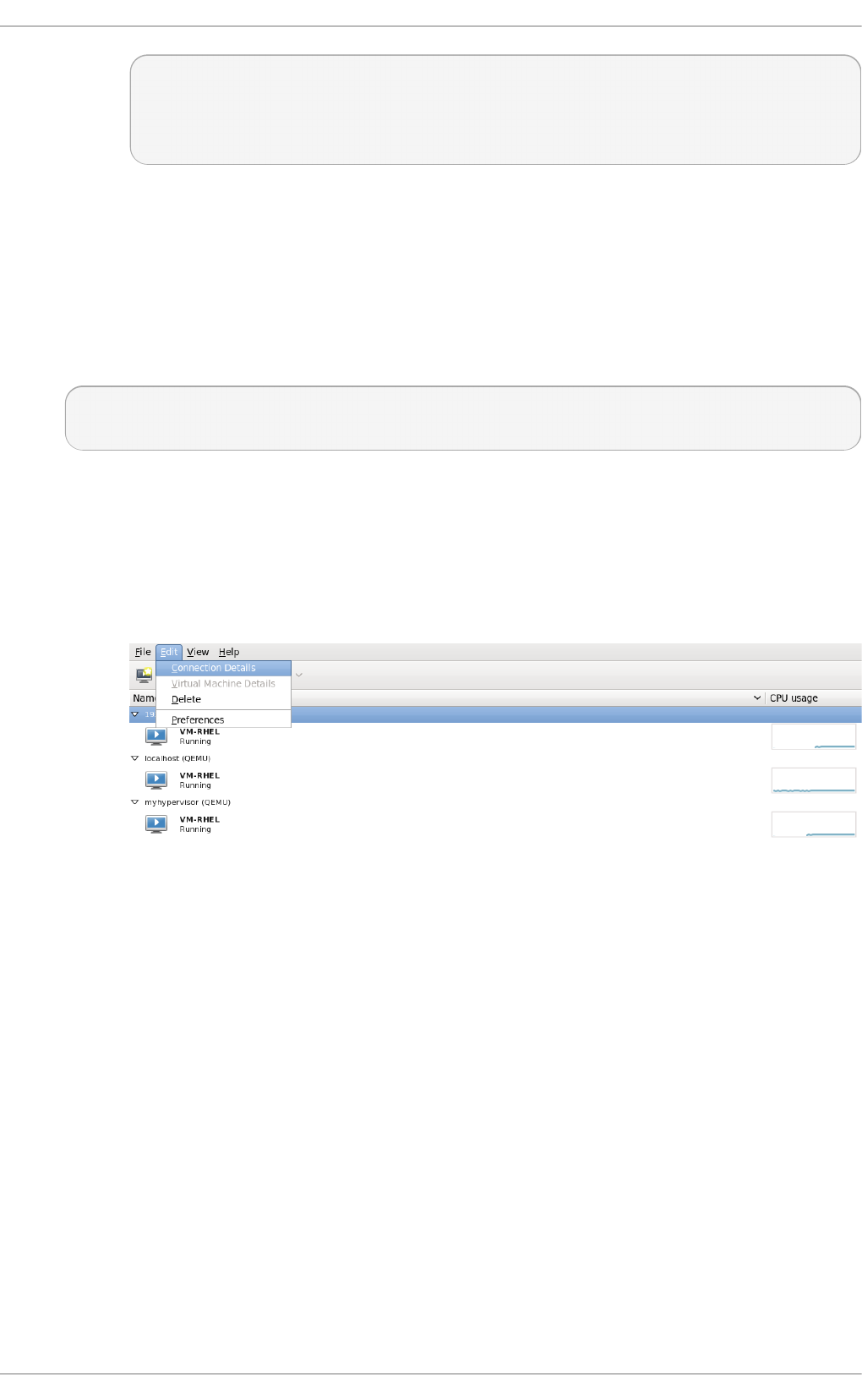

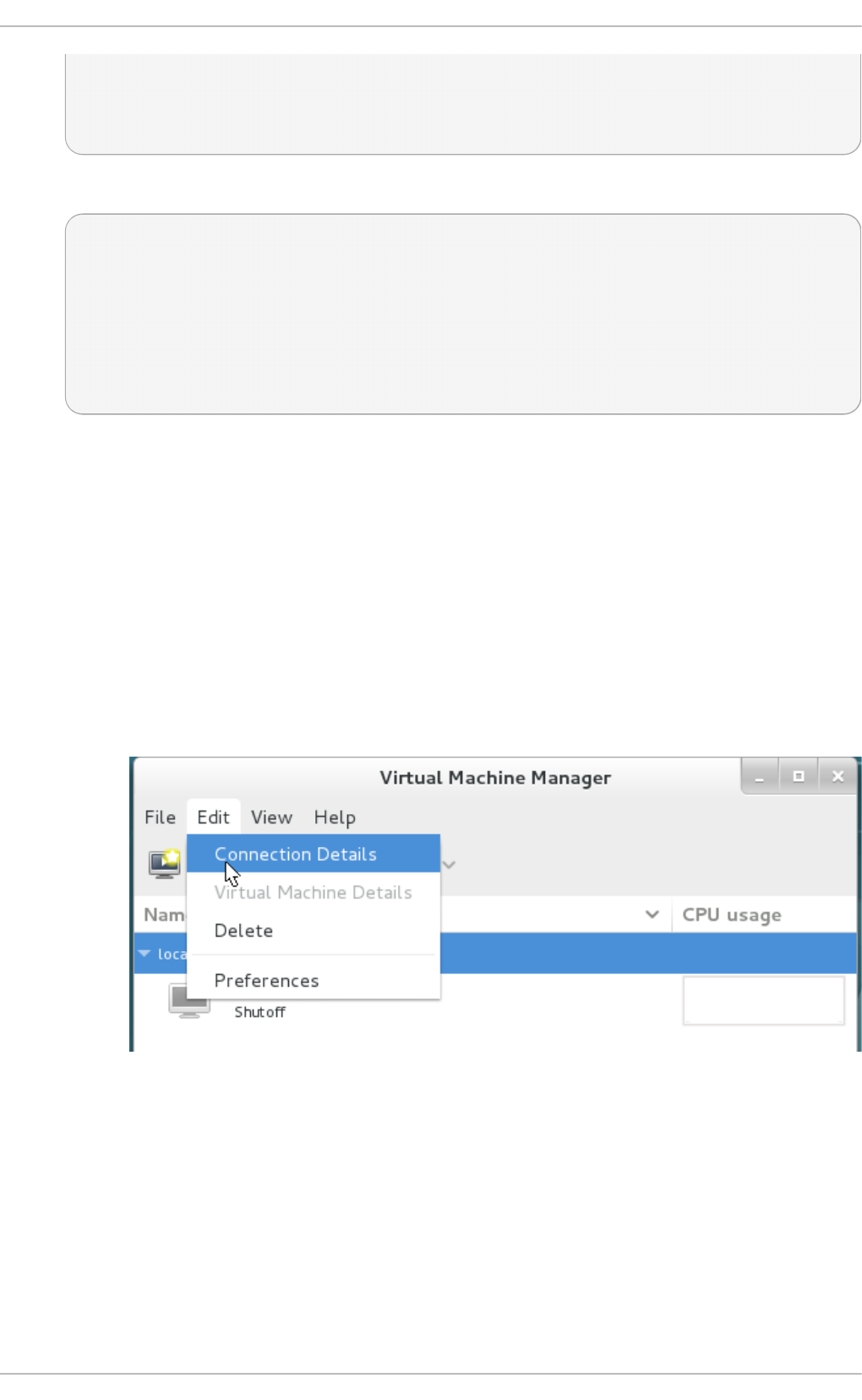

- Chapter 21. Remote management of guests

- Chapter 22. Managing guests with the Virtual Machine Manager (virt-manager)

- 22.1. Starting virt-manager

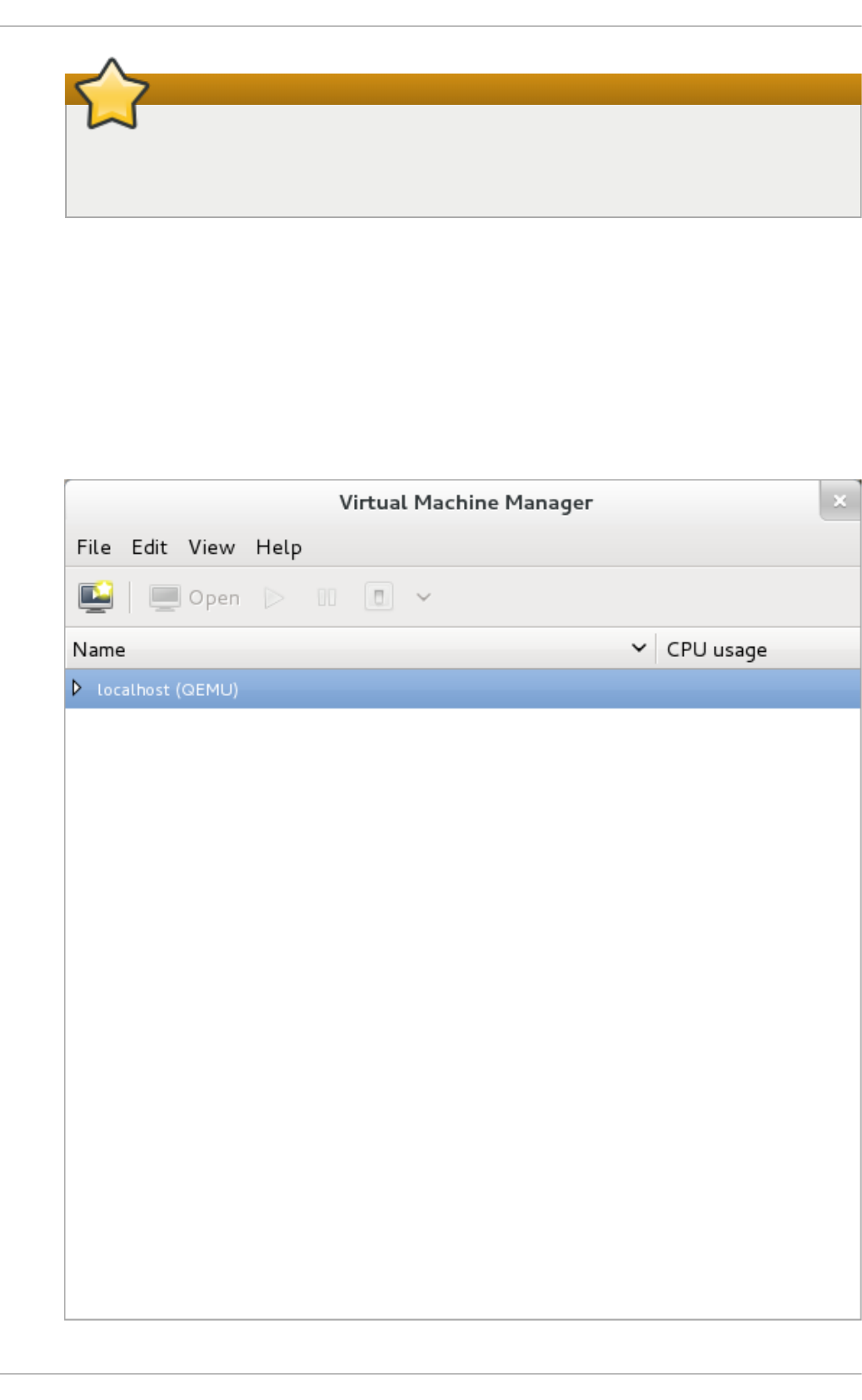

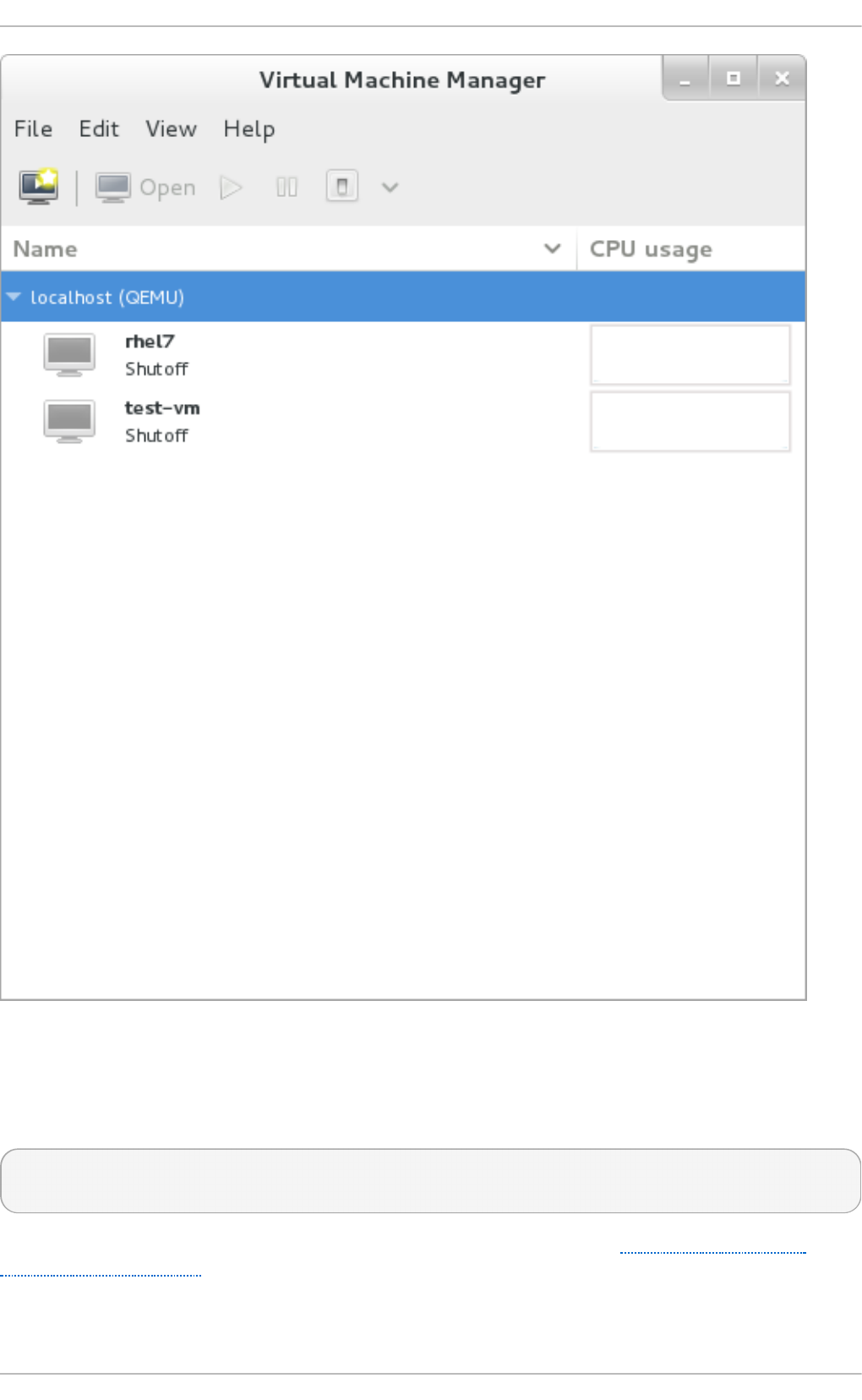

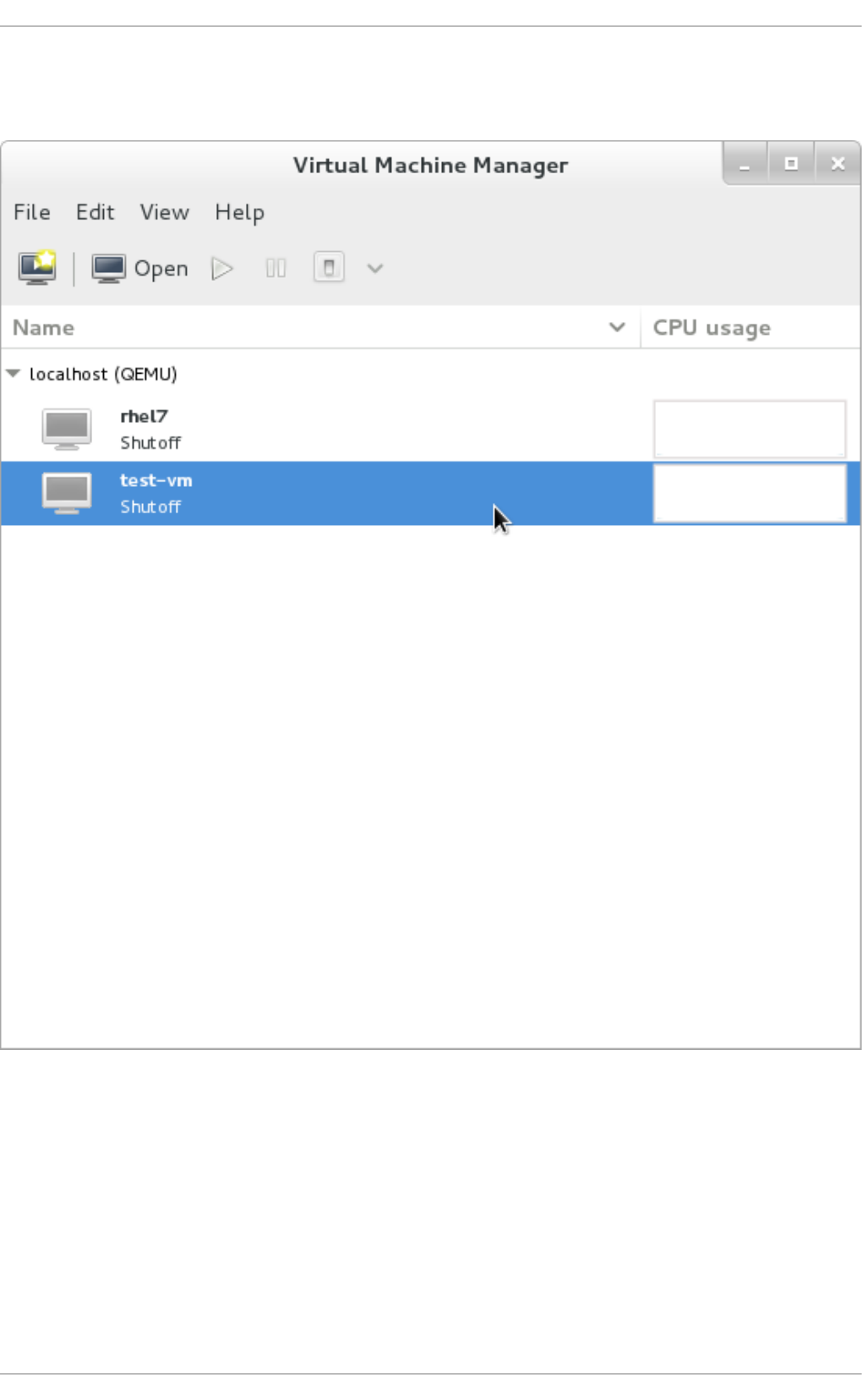

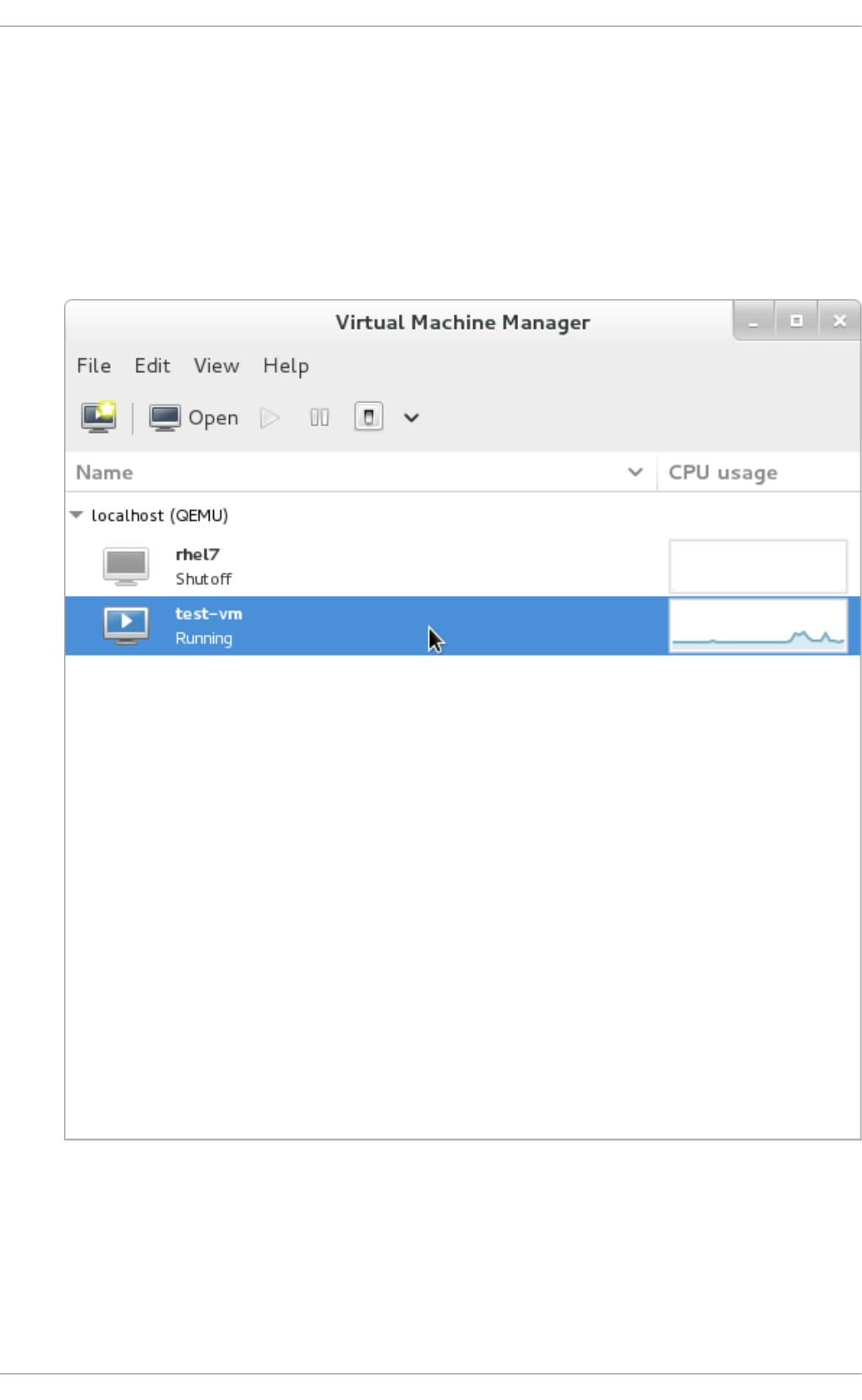

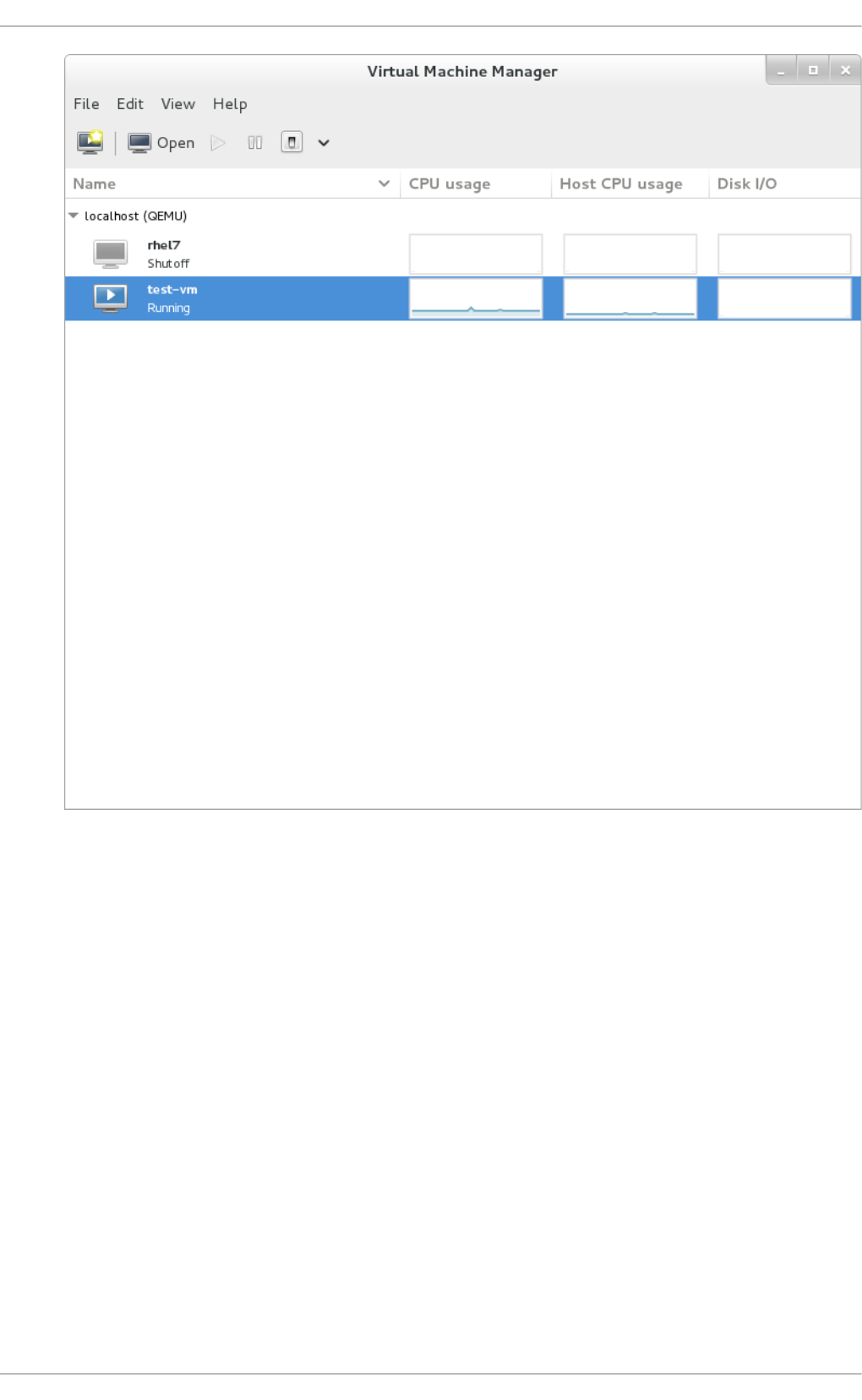

- 22.2. The Virtual Machine Manager main window

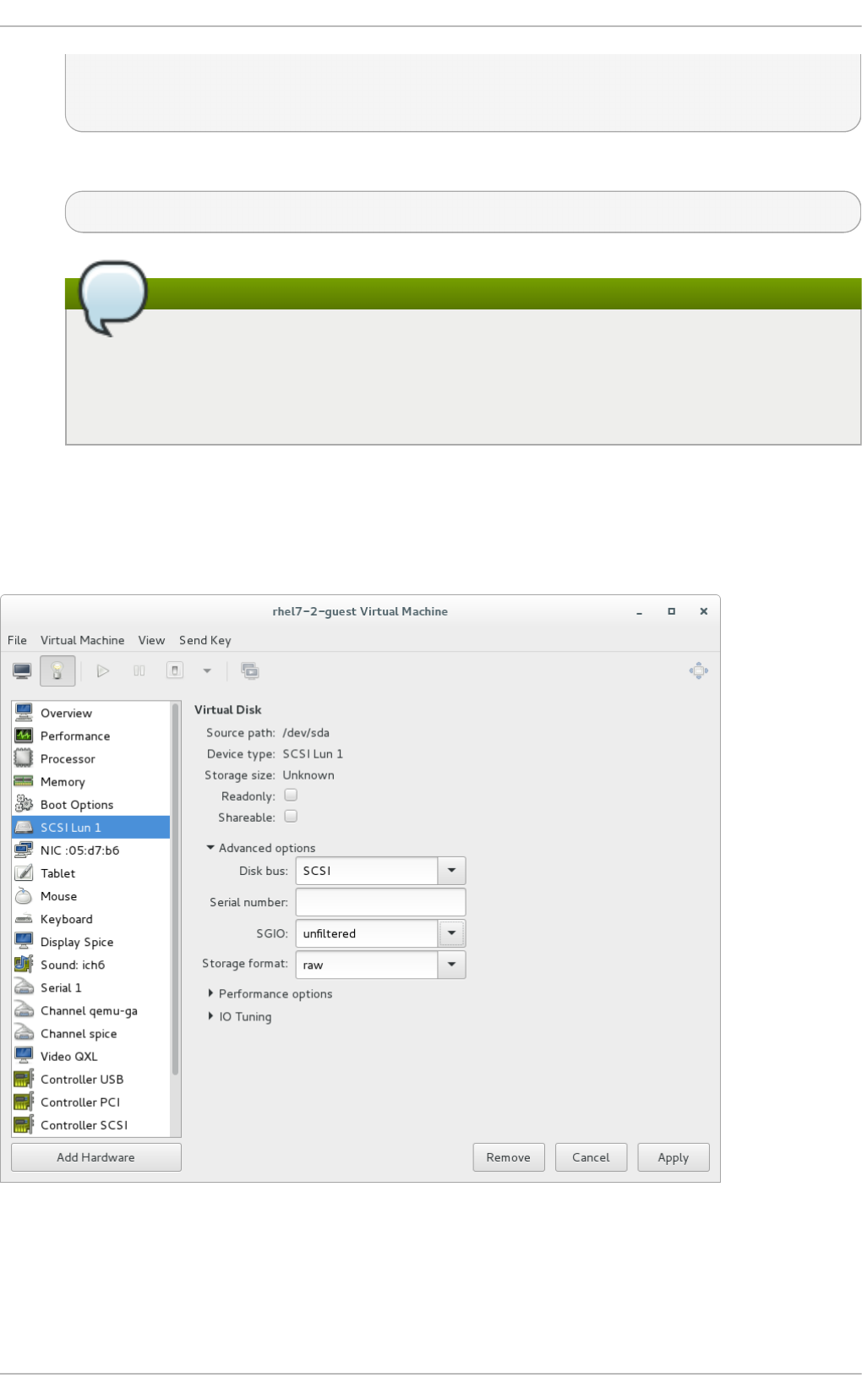

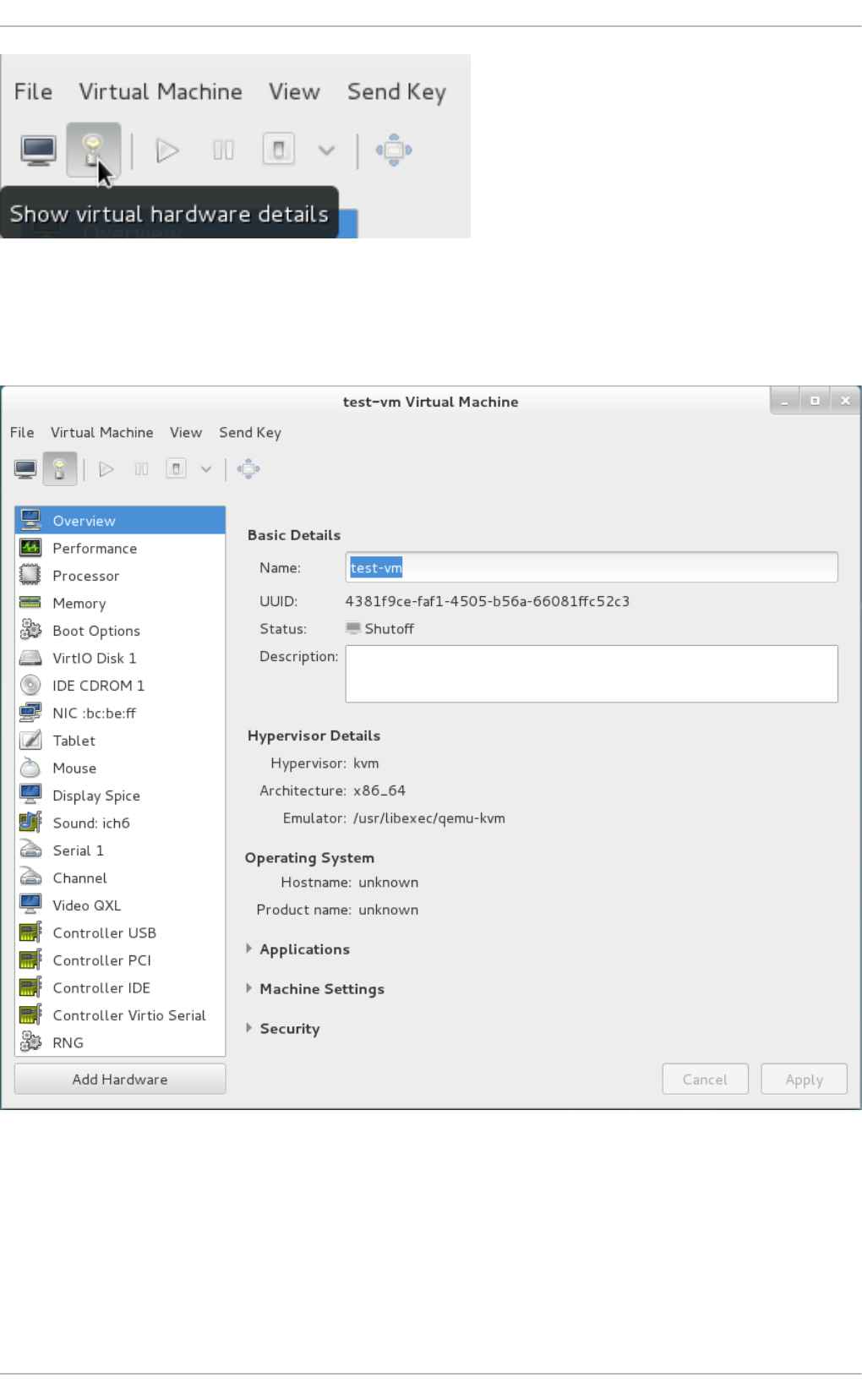

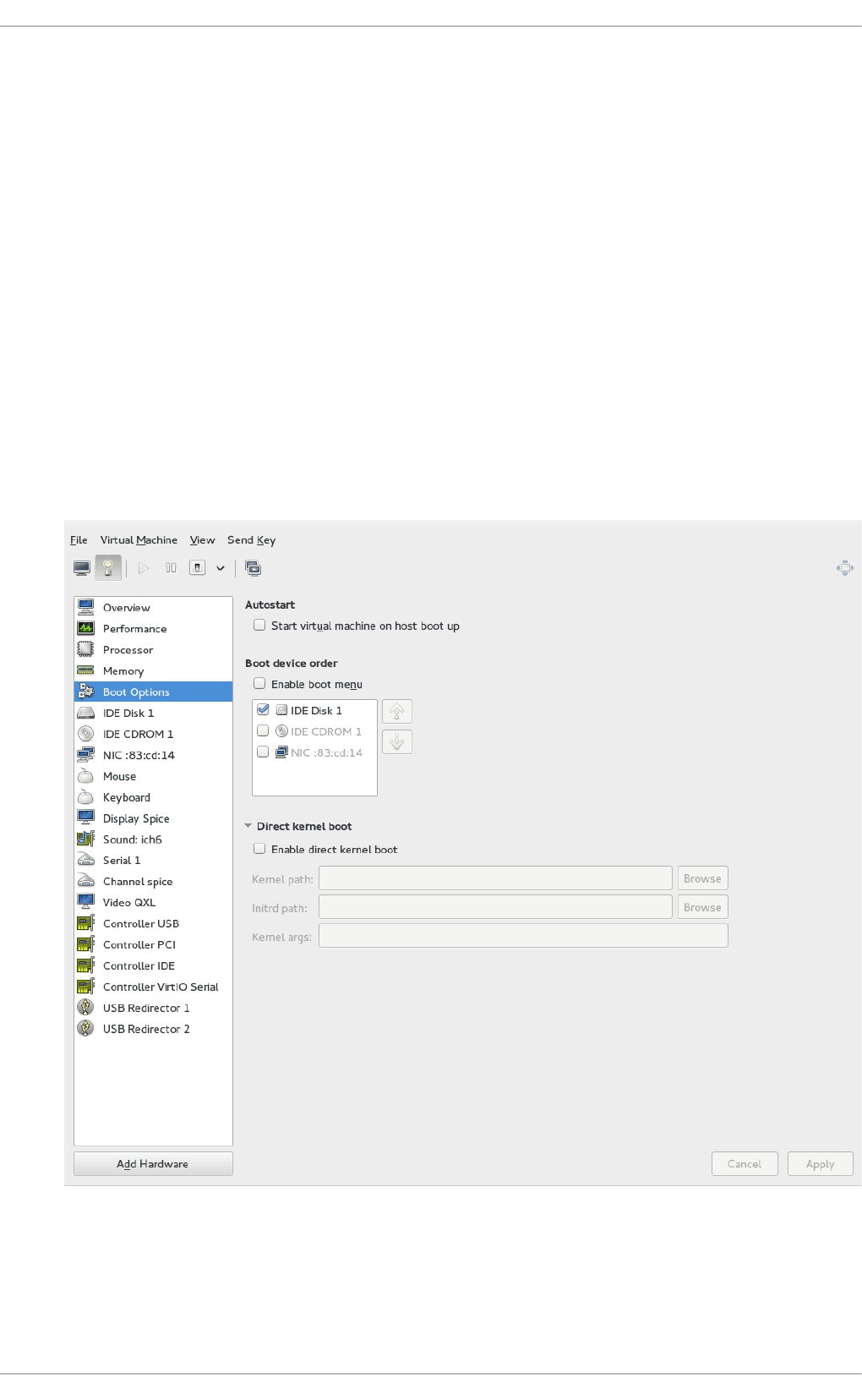

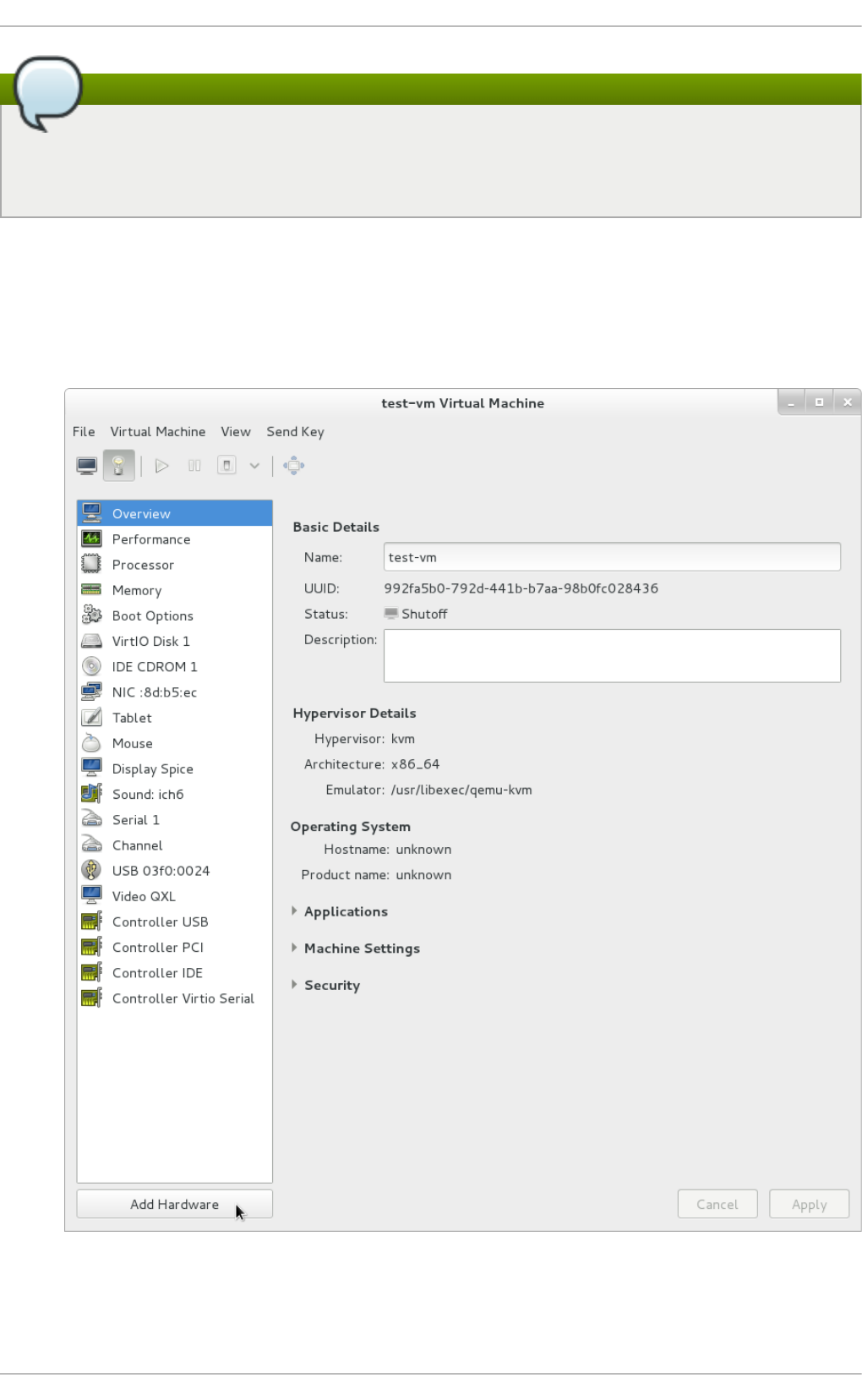

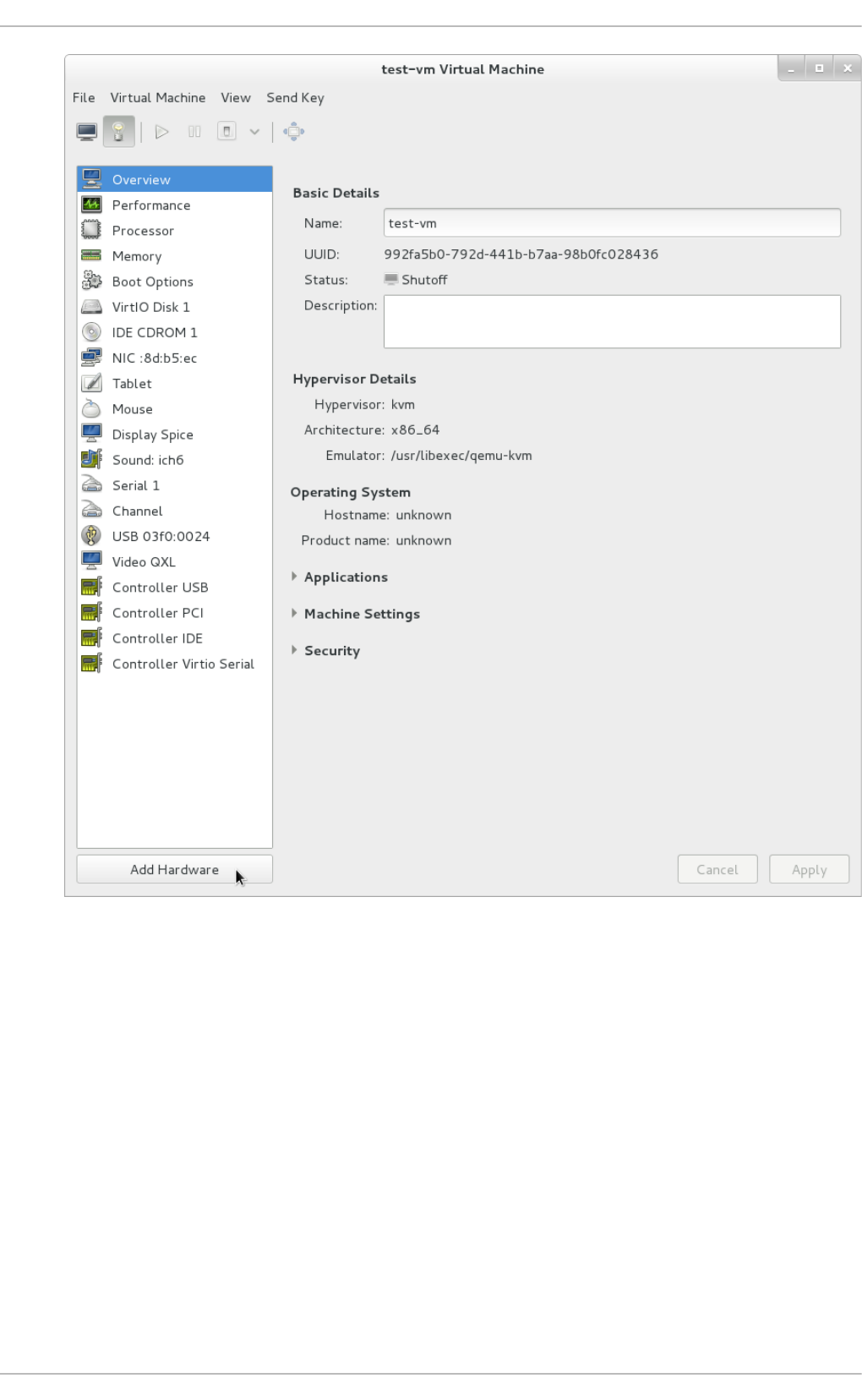

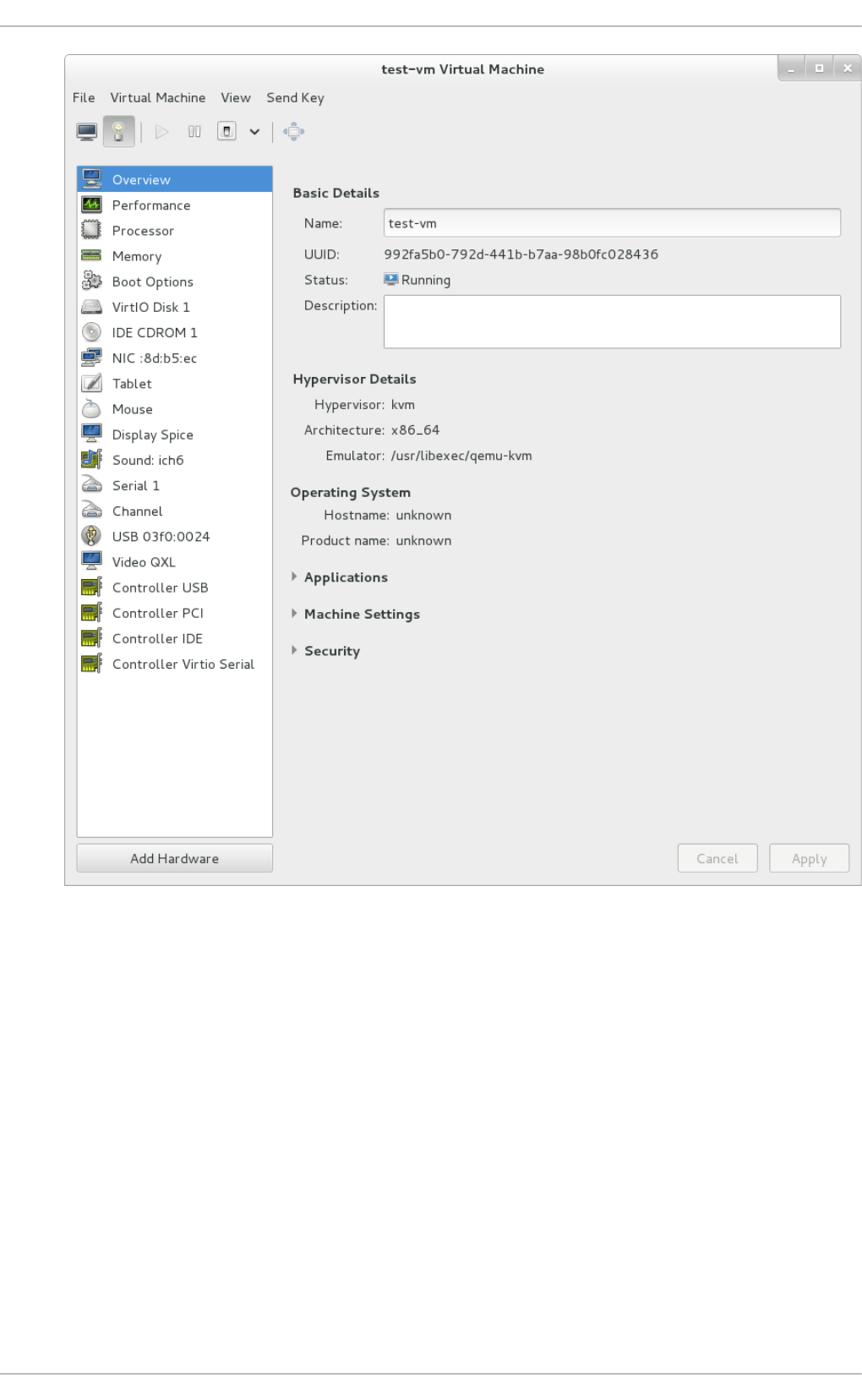

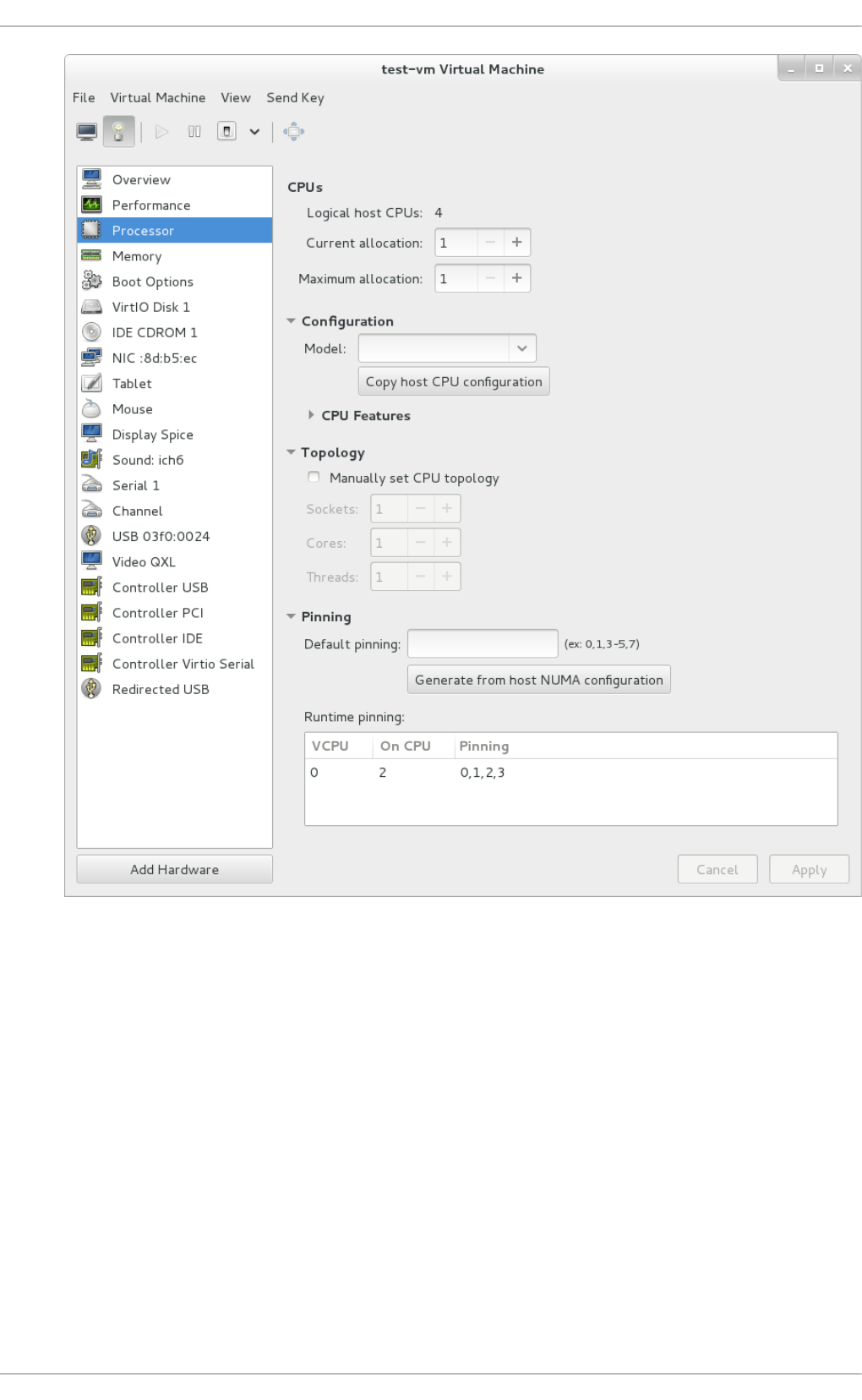

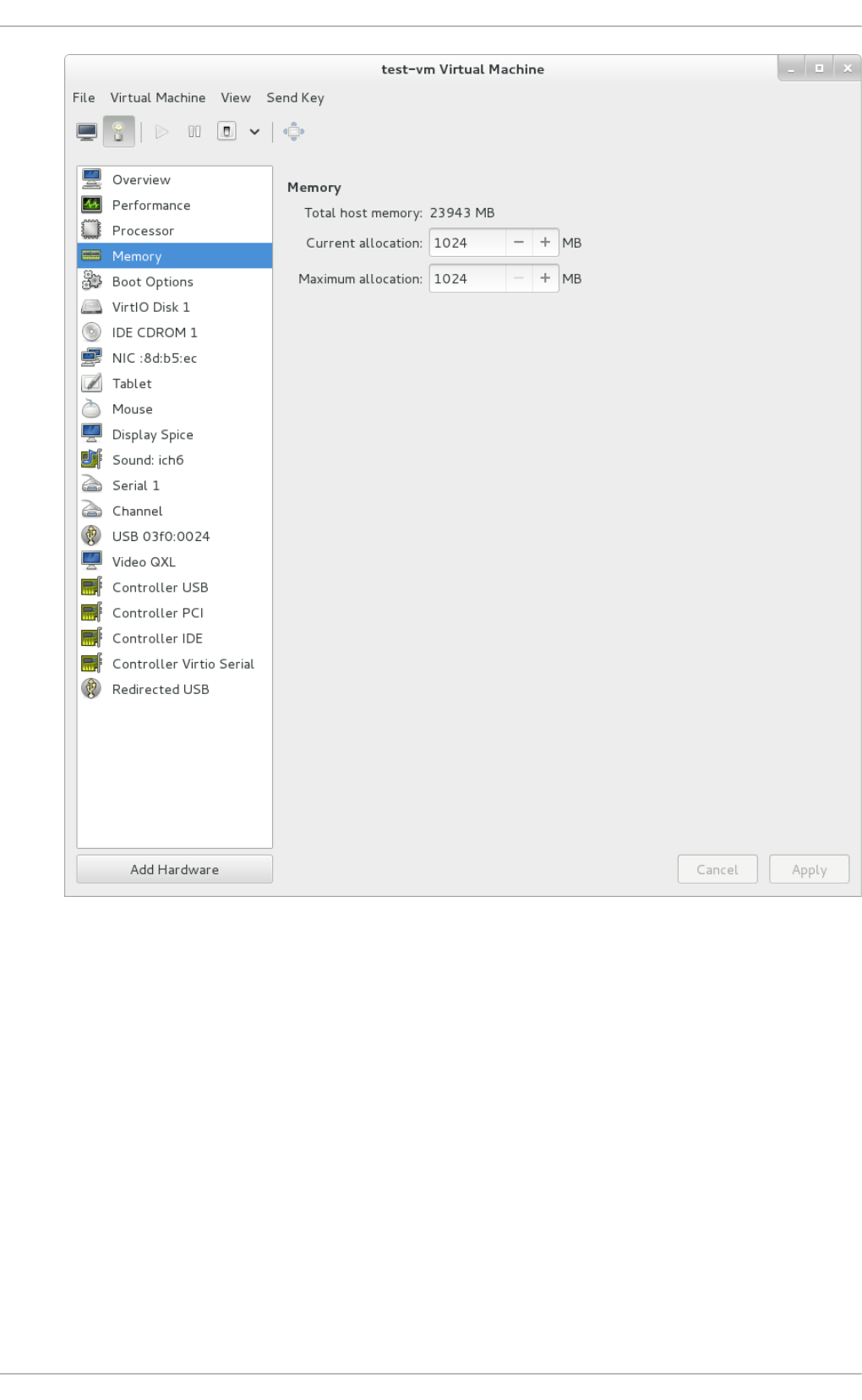

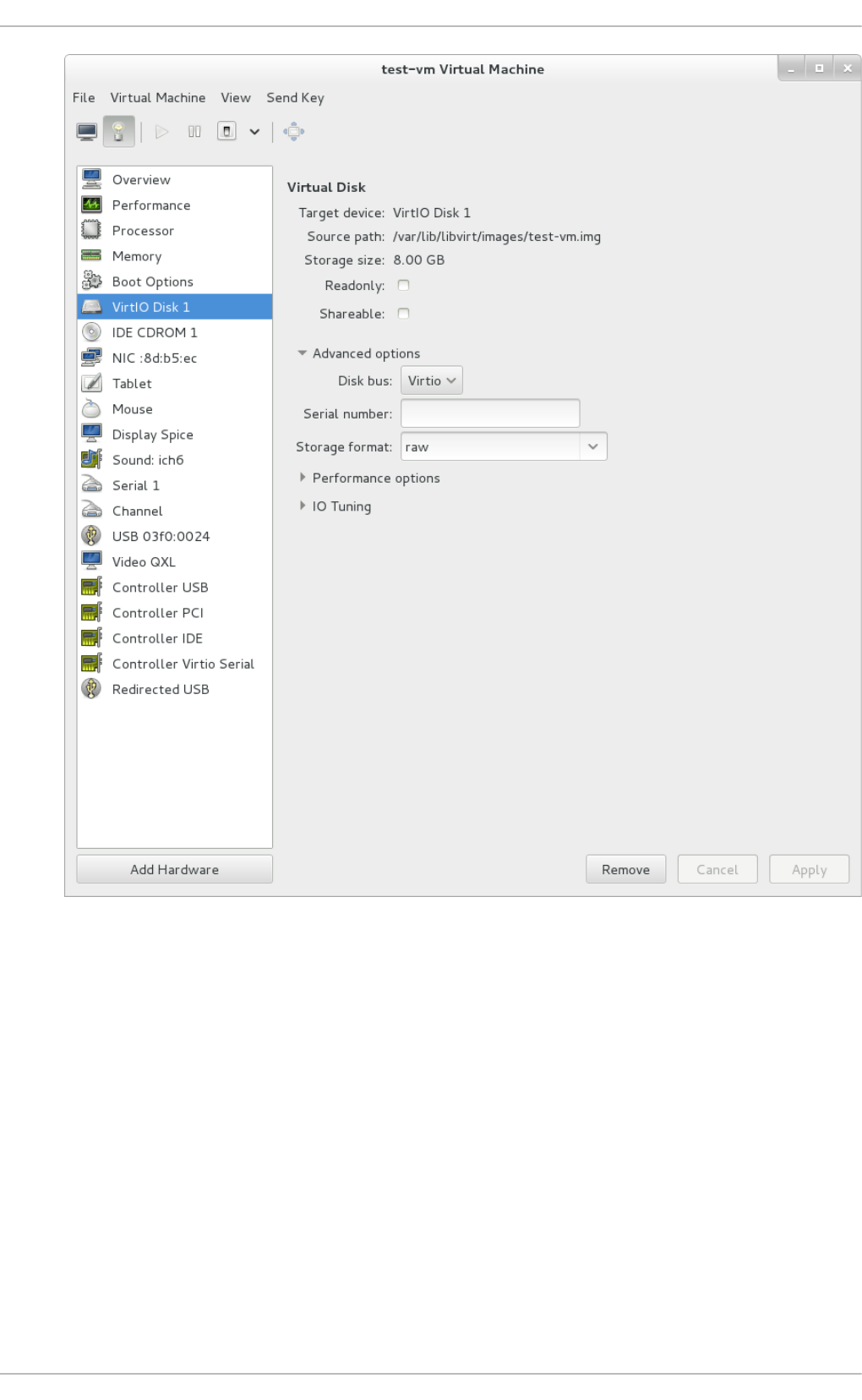

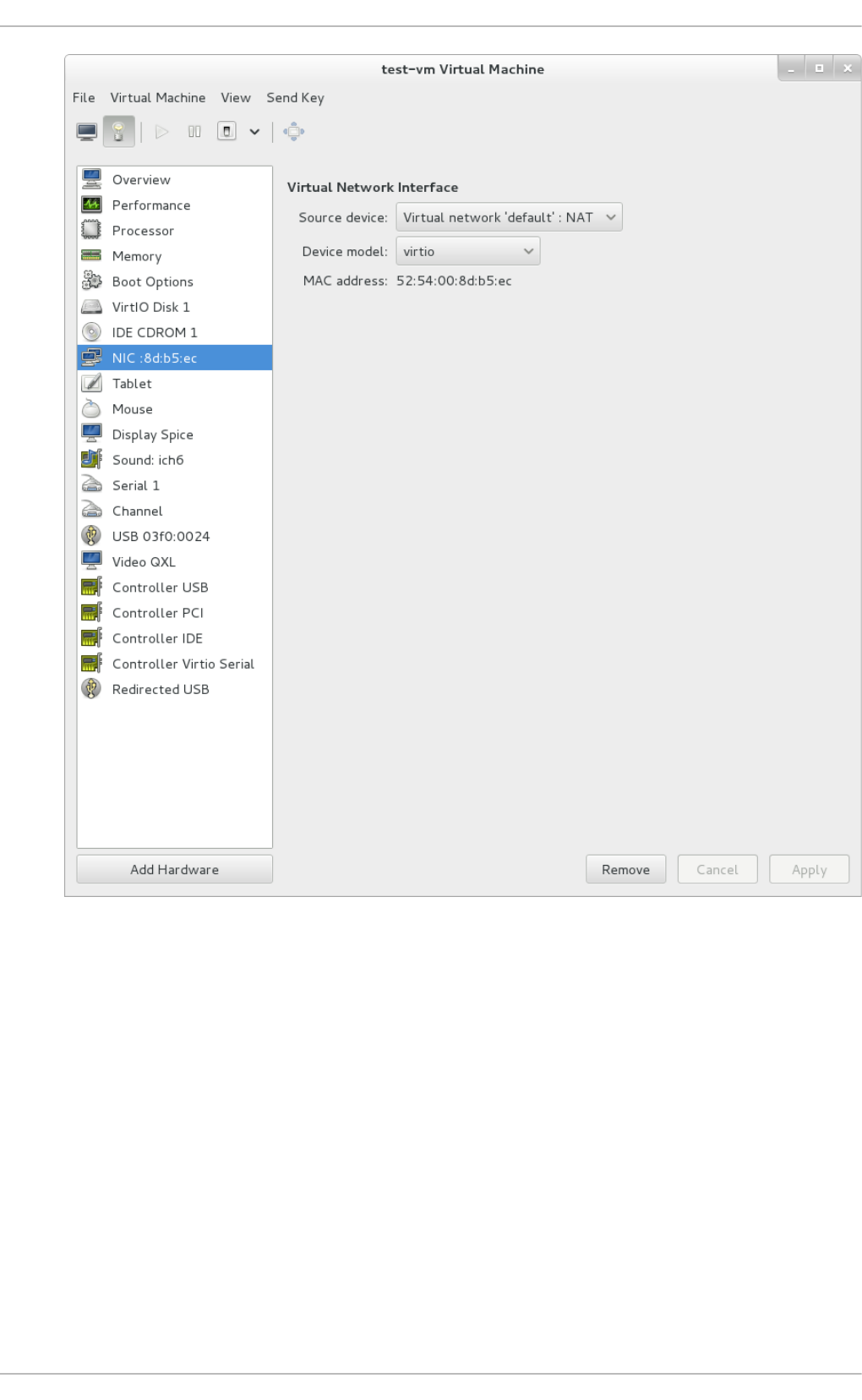

- 22.3. The virtual hardware details window

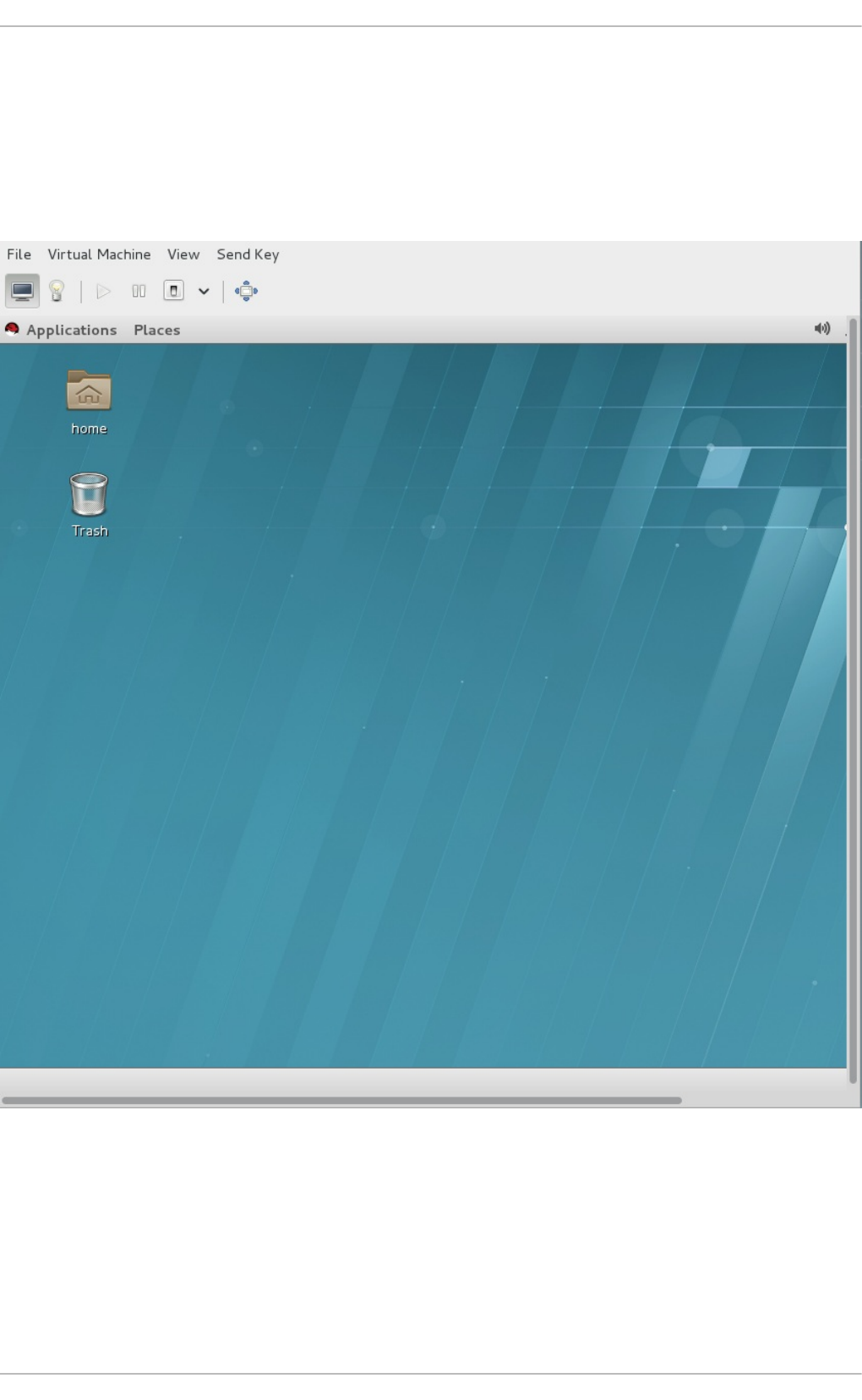

- 22.4. Virtual Machine graphical console

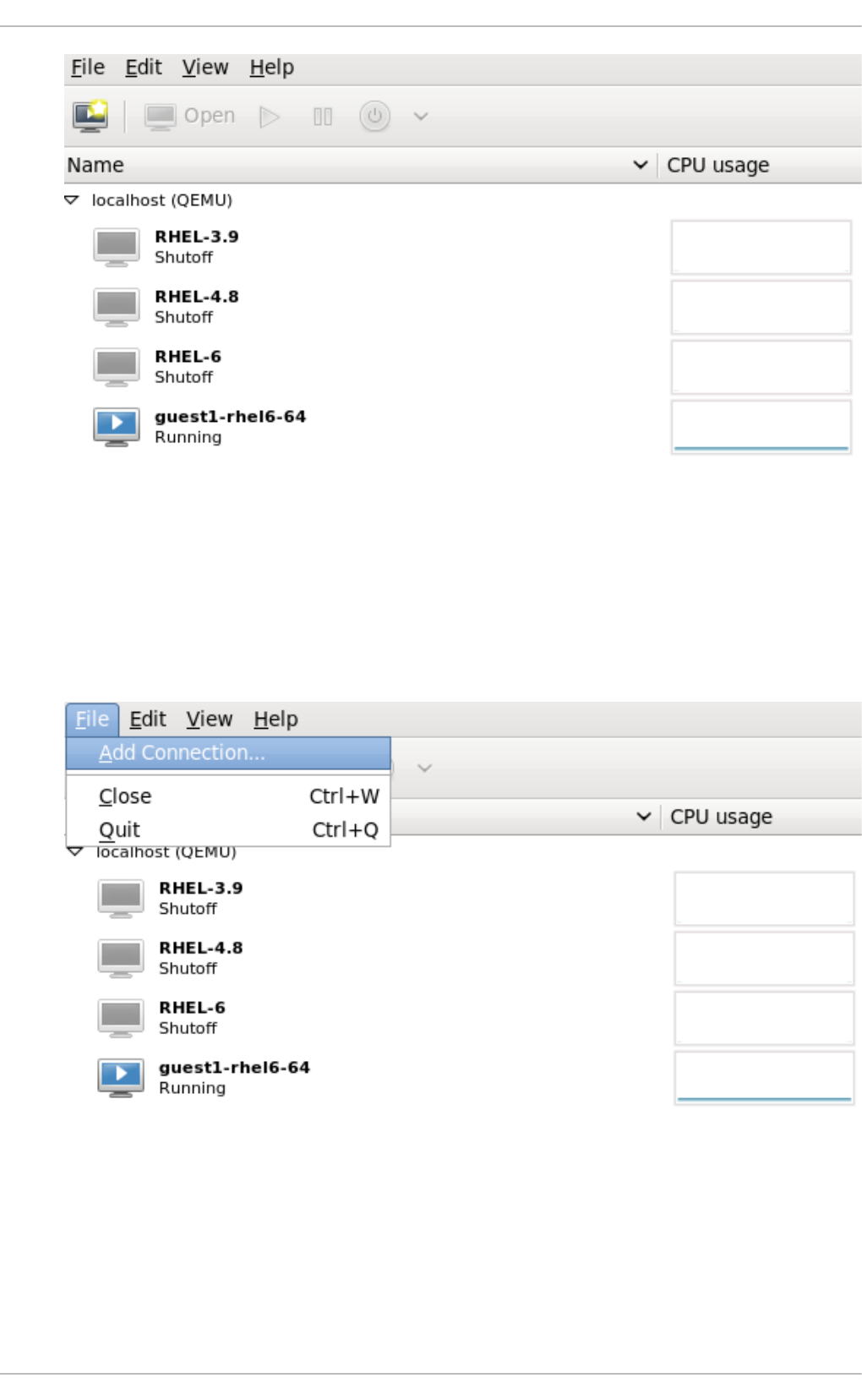

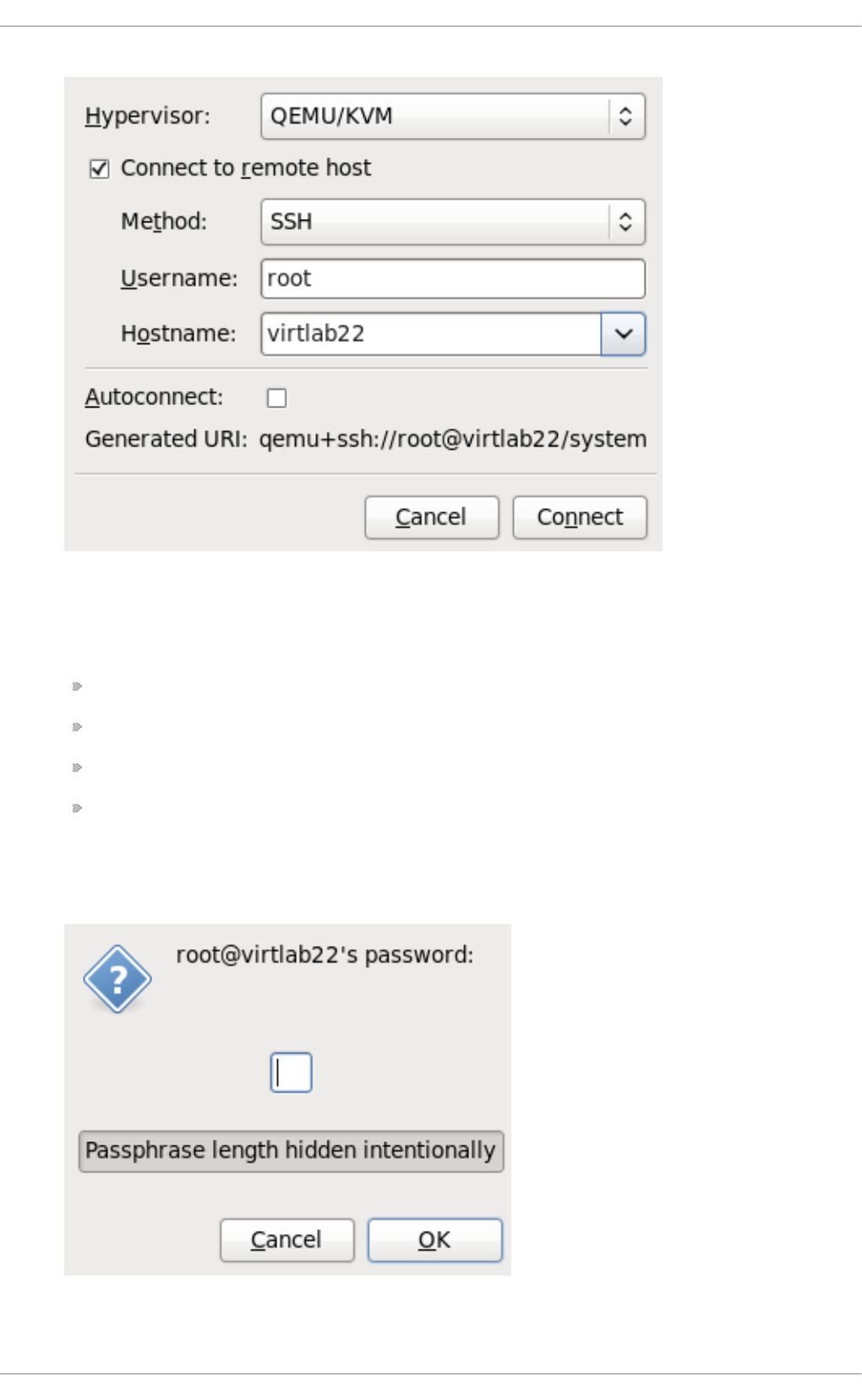

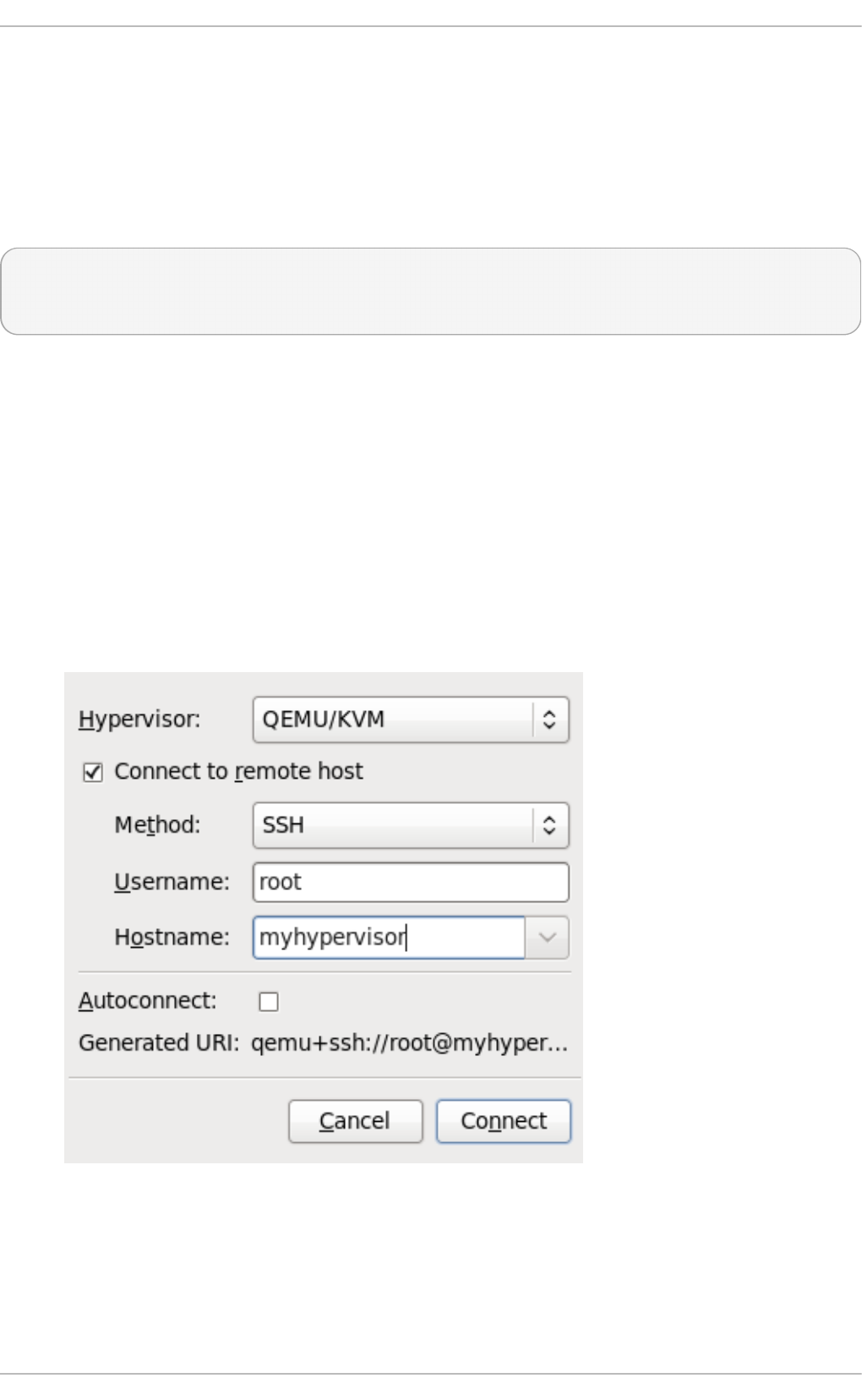

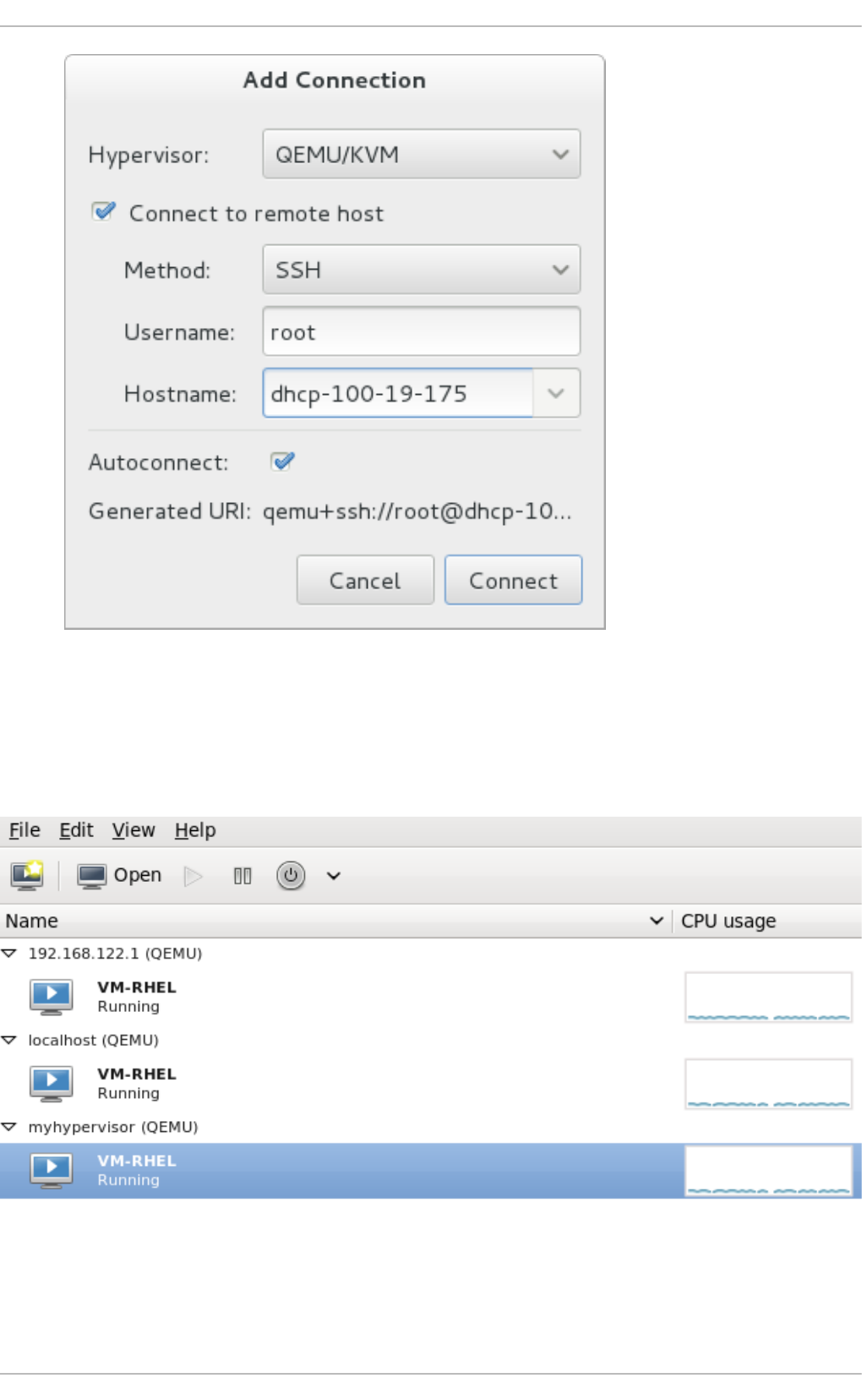

- 22.5. Adding a remote connection

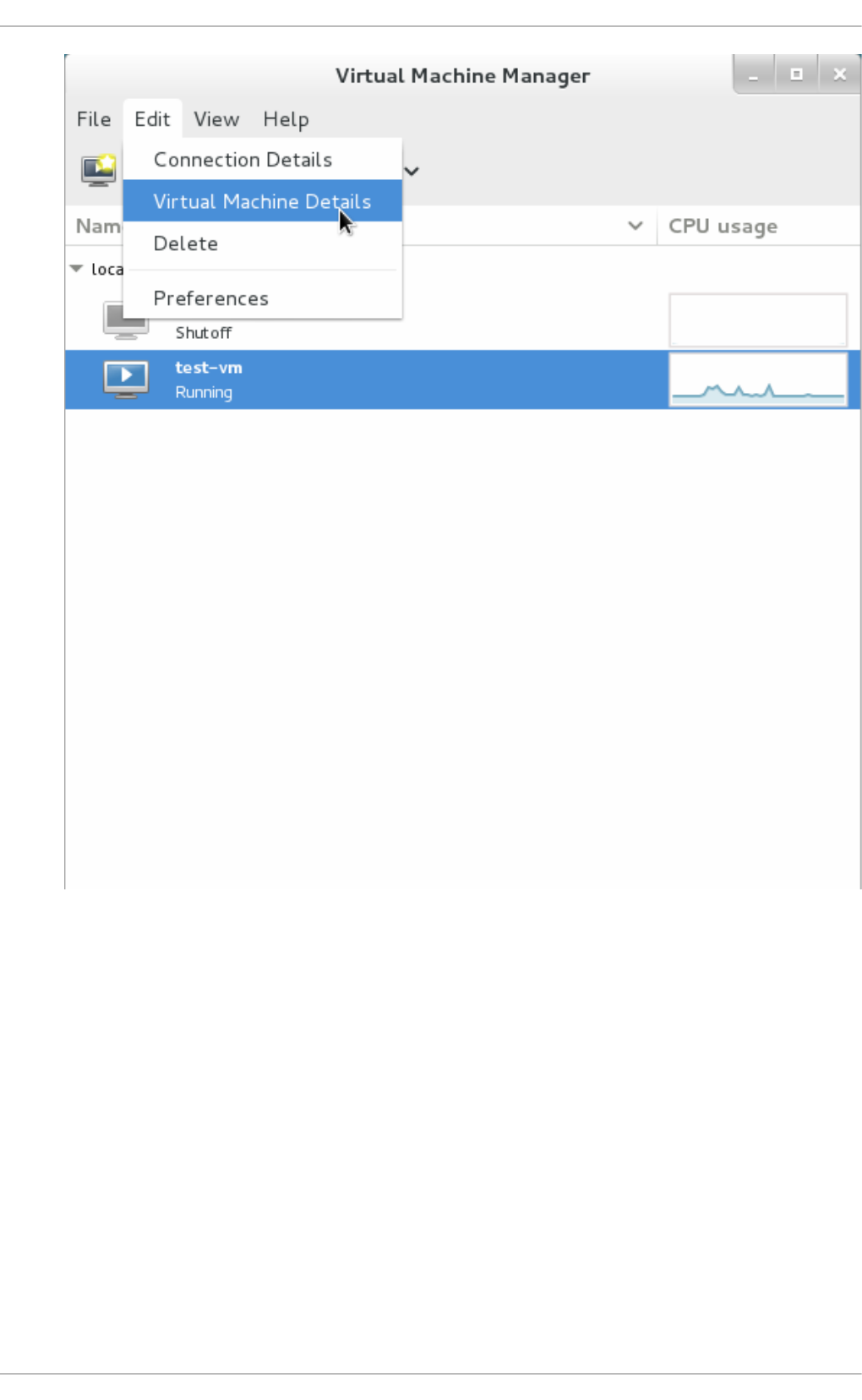

- 22.6. Displaying guest details

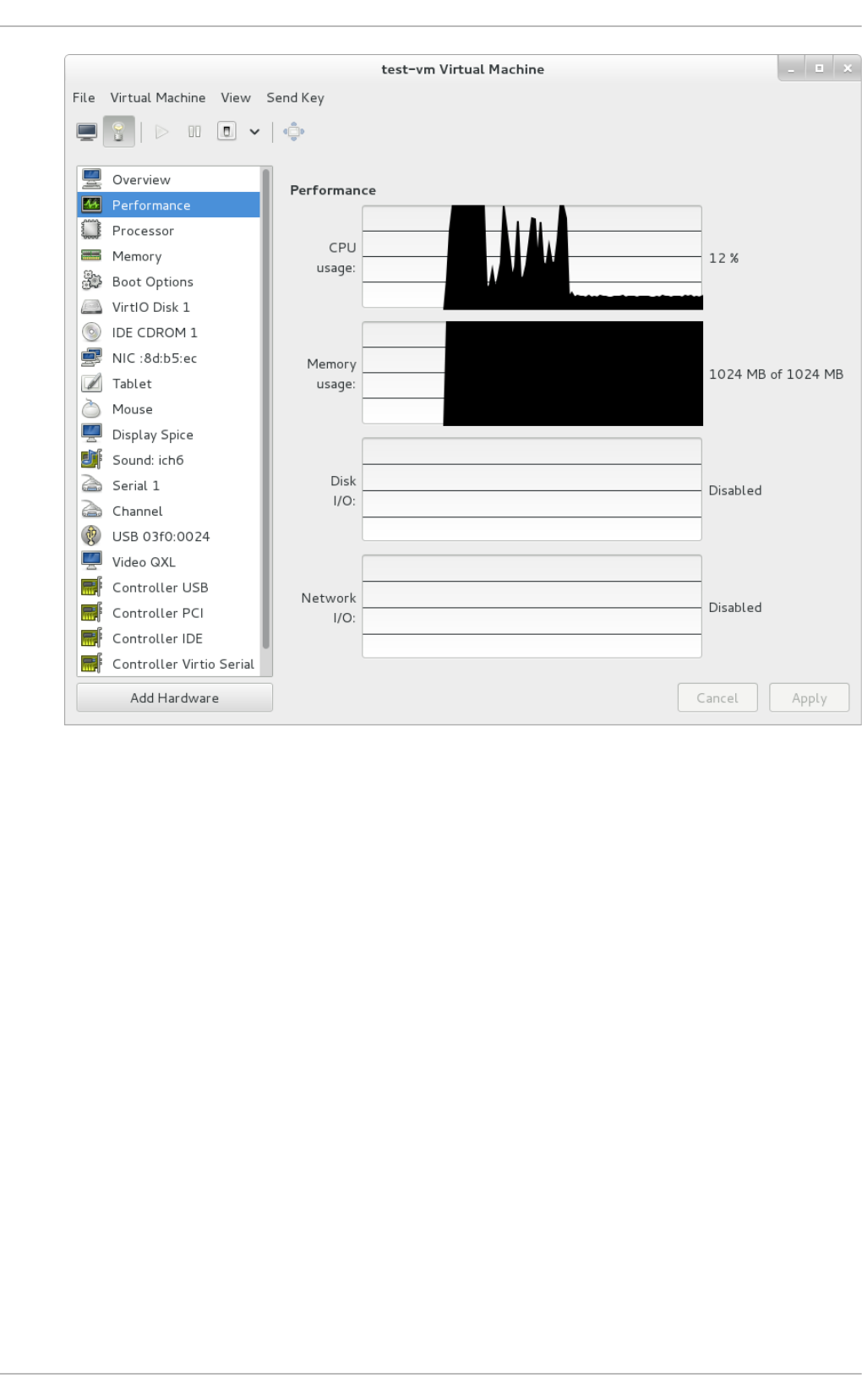

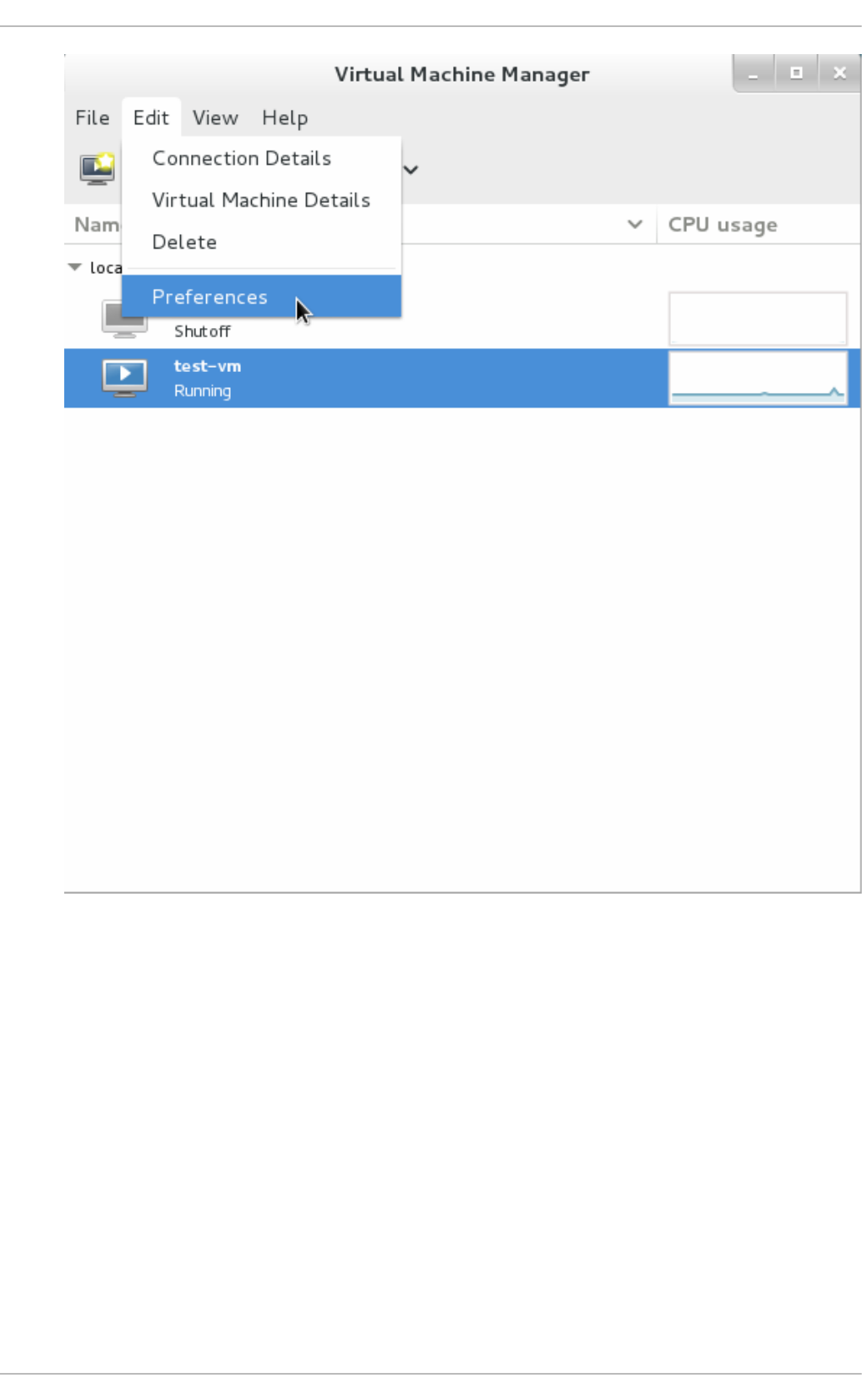

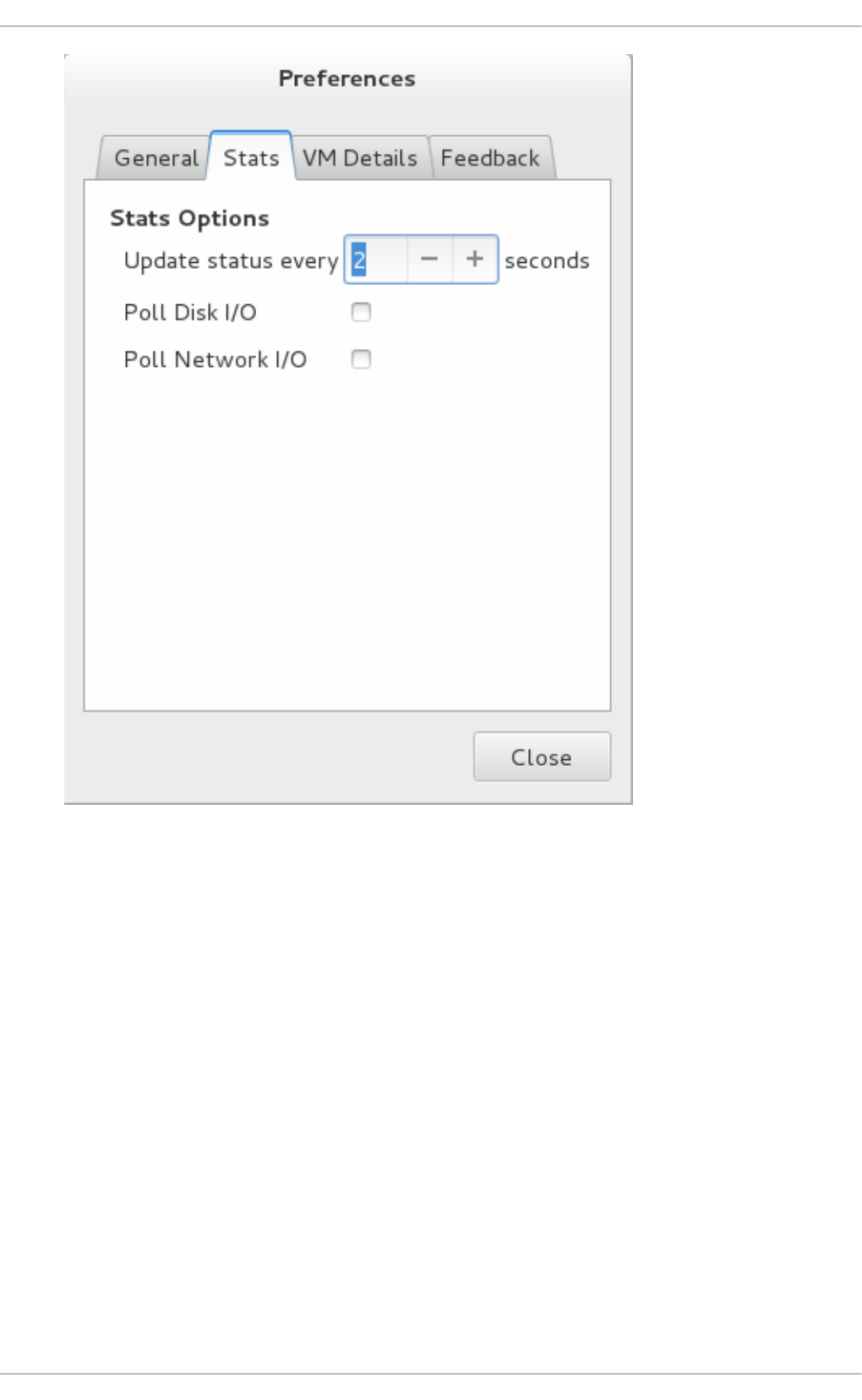

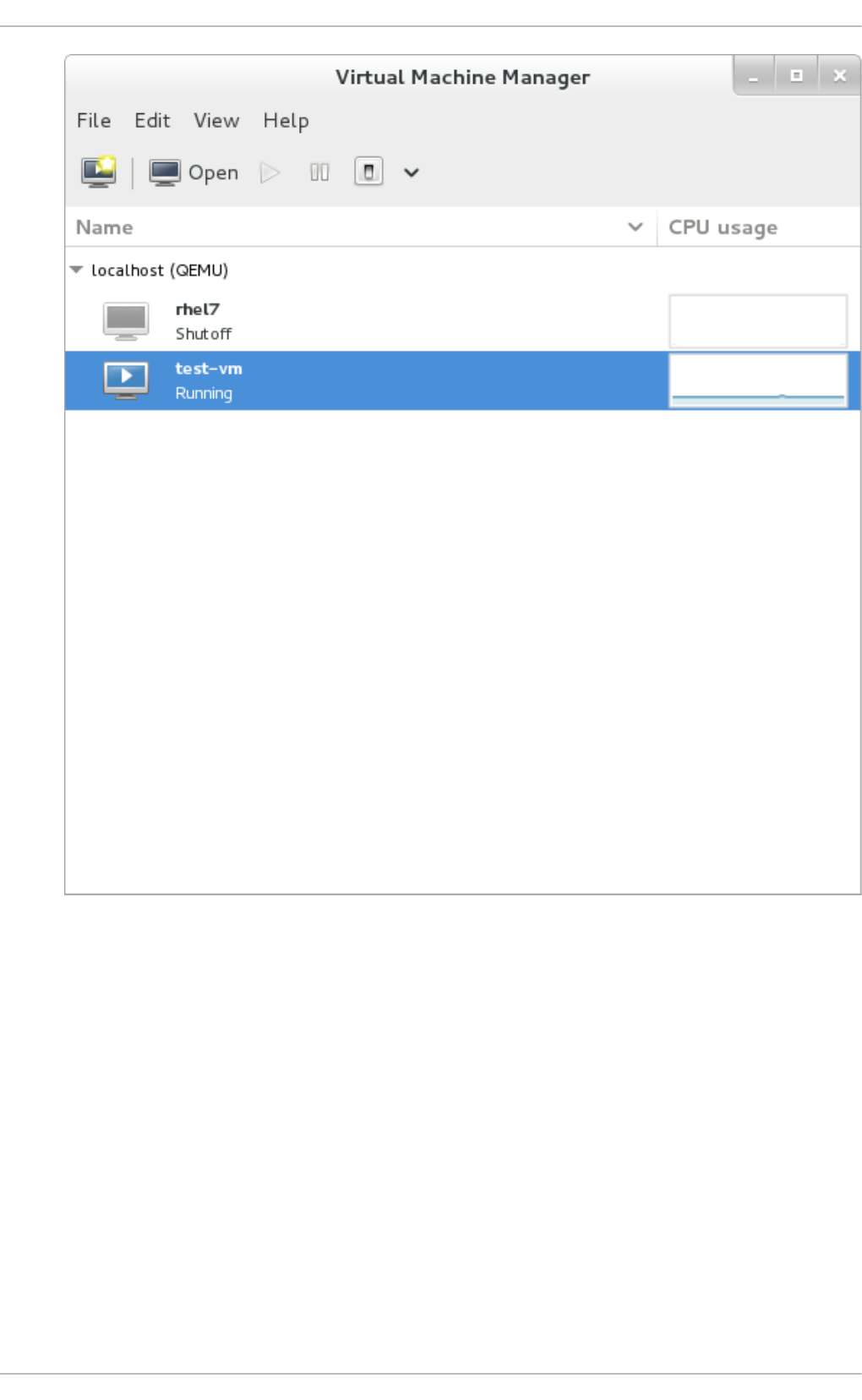

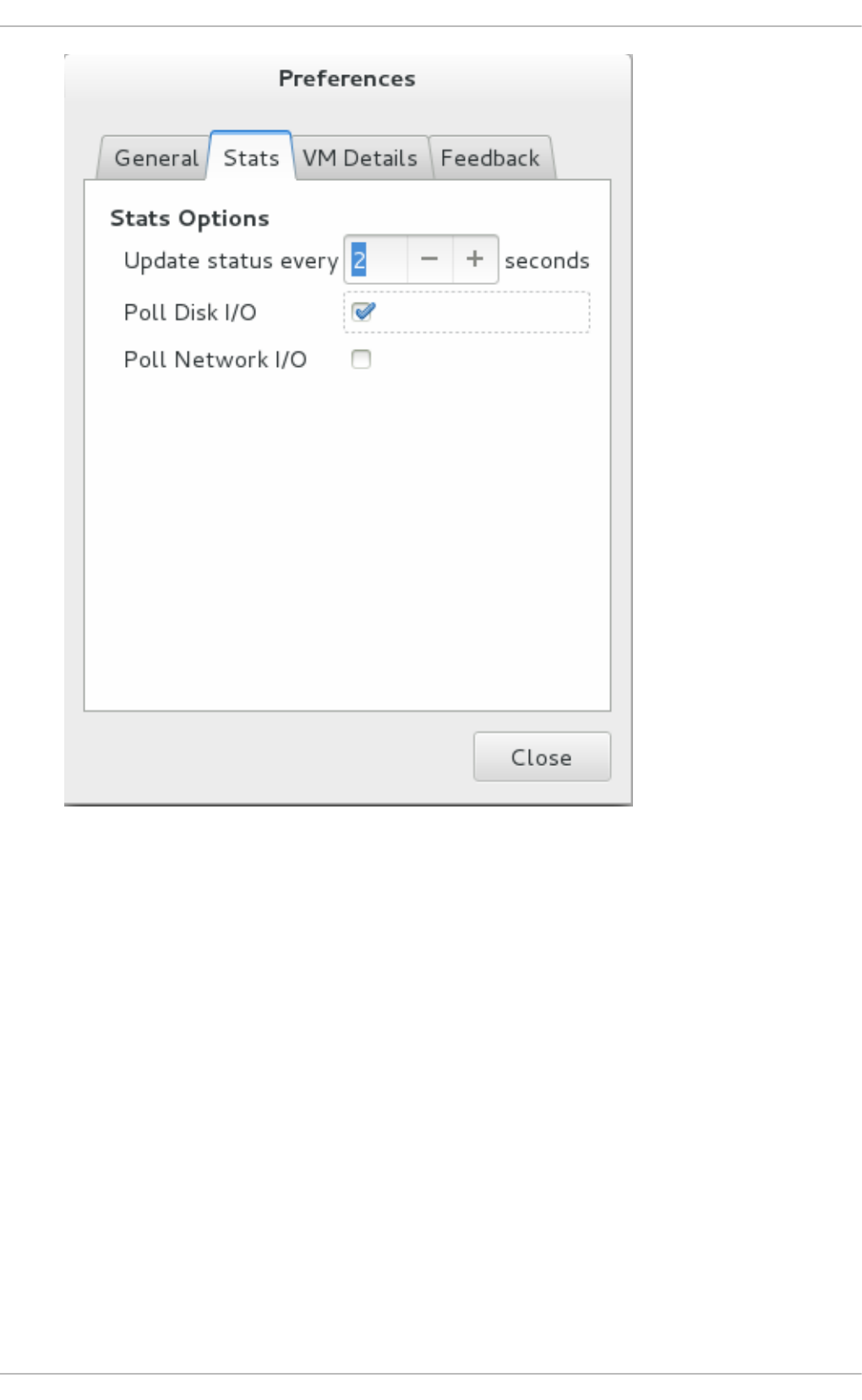

- 22.7. Performance monitoring

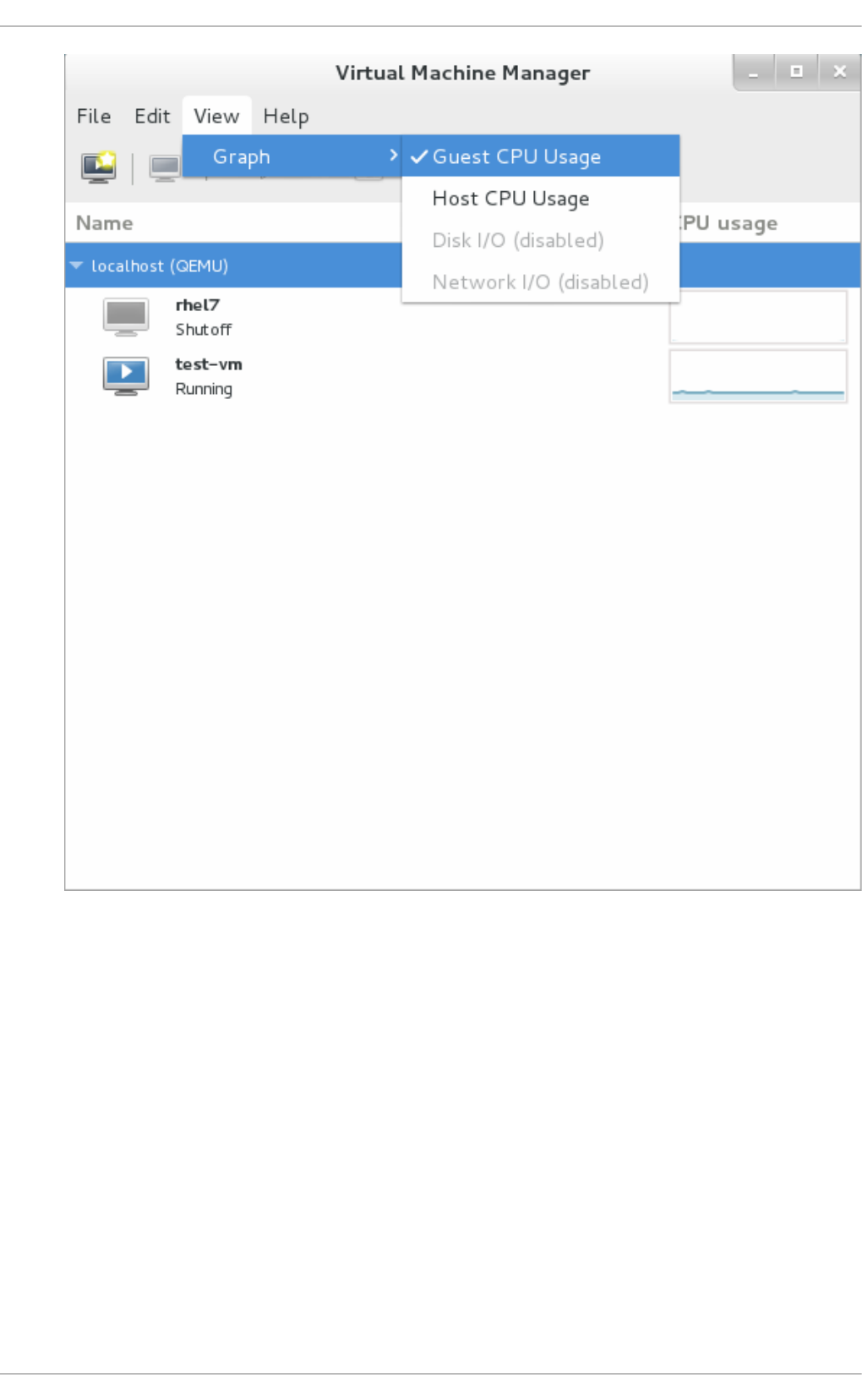

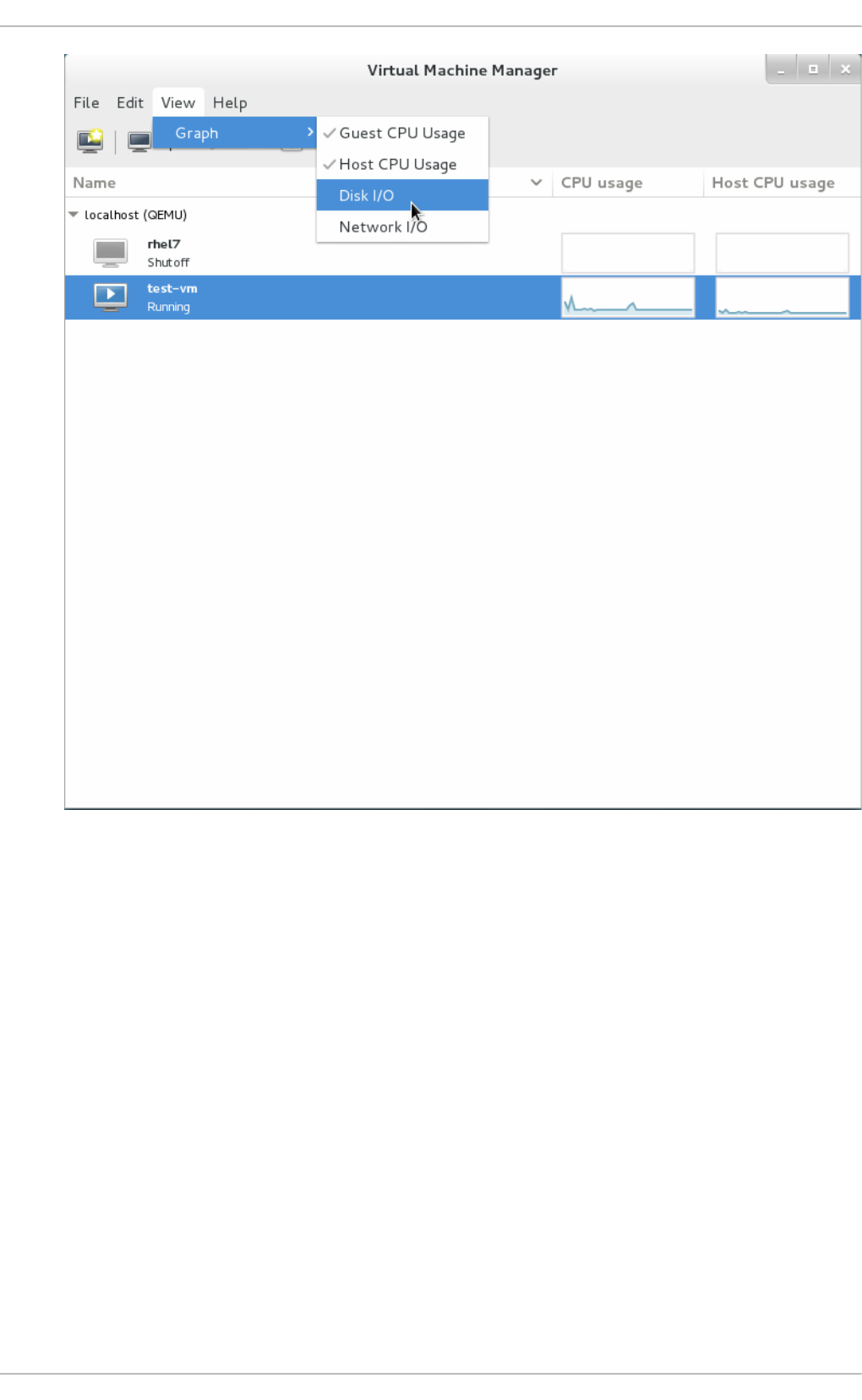

- 22.8. Displaying CPU usage for guests

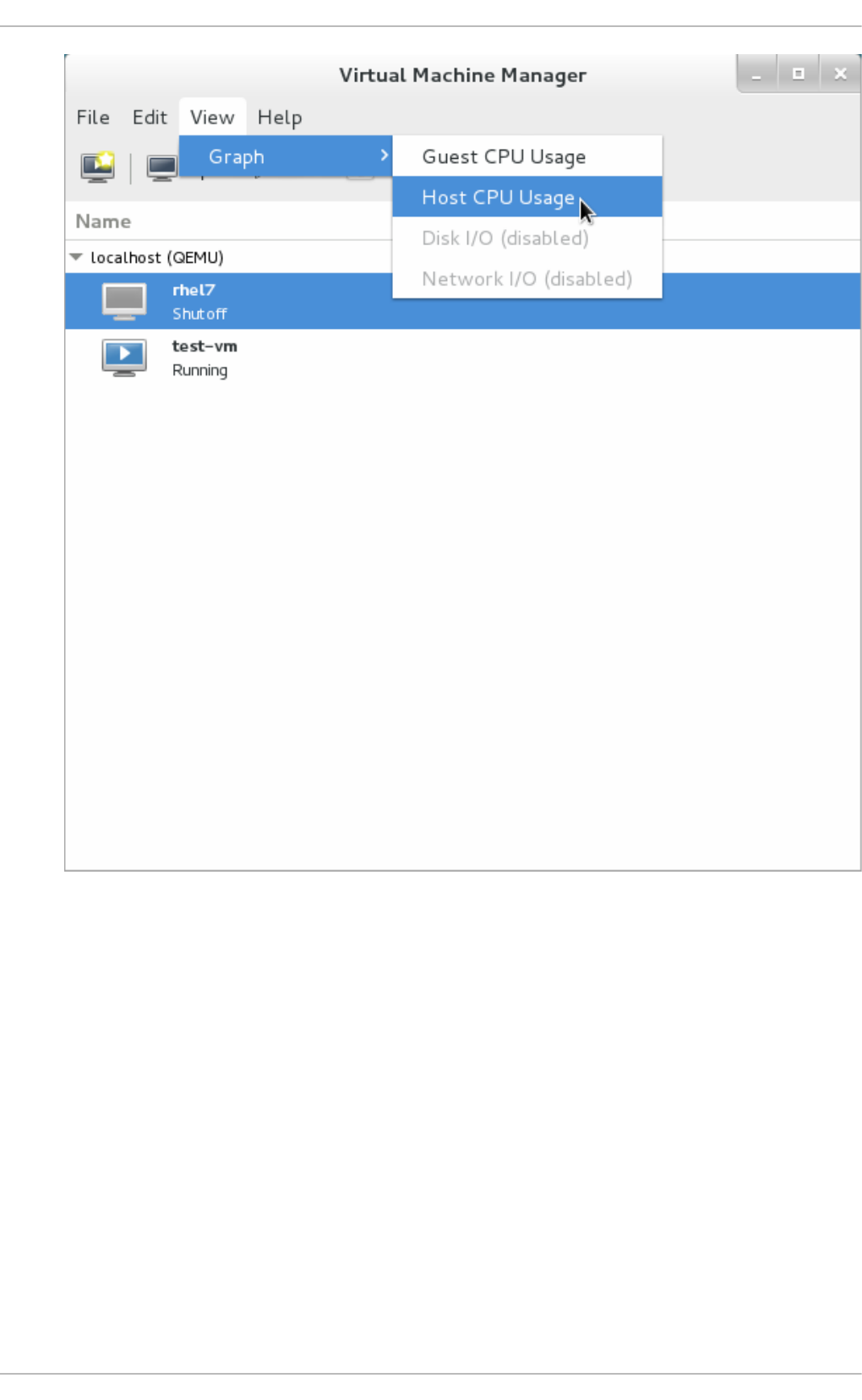

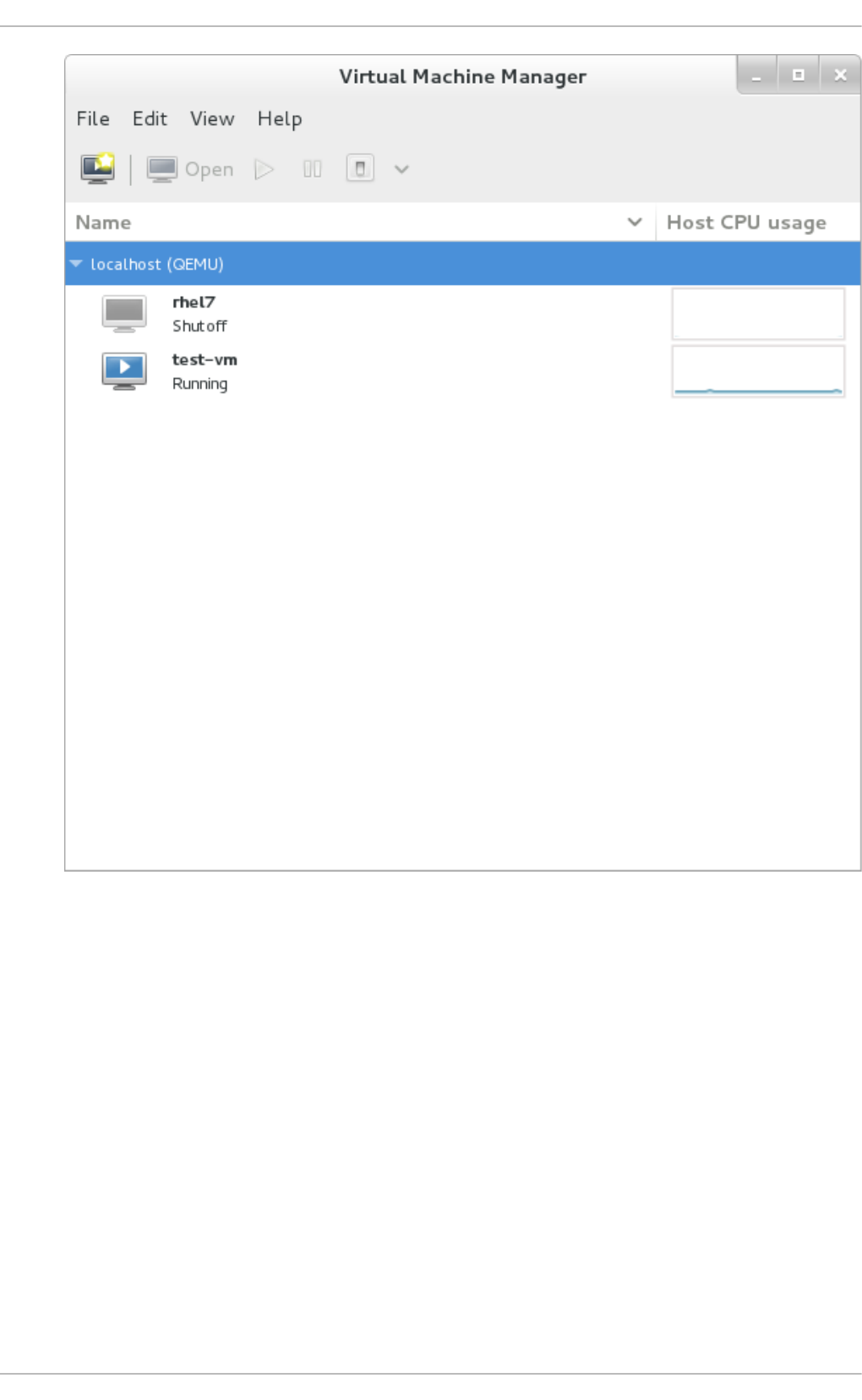

- 22.9. Displaying CPU usage for hosts

- 22.10. Displaying Disk I/O

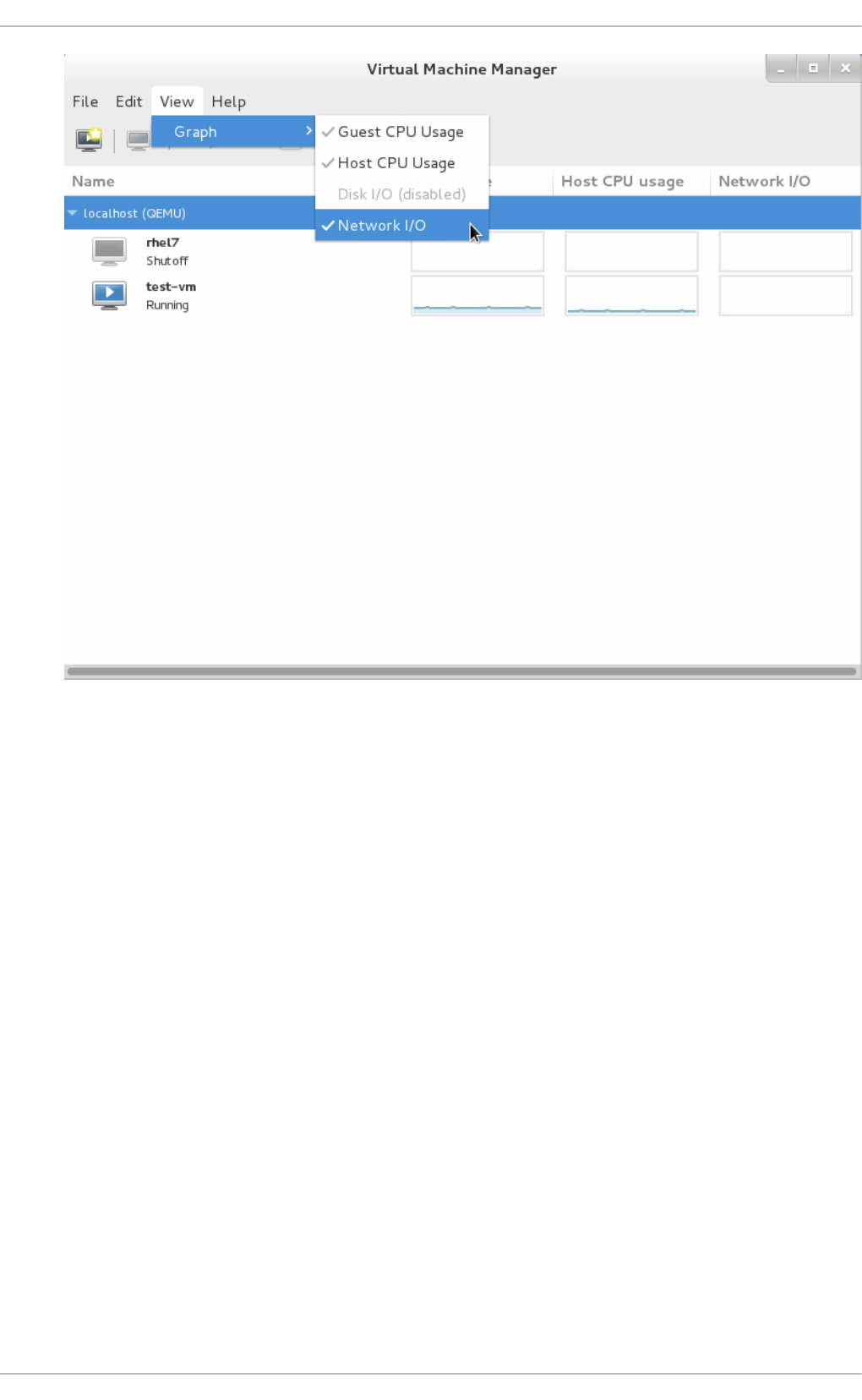

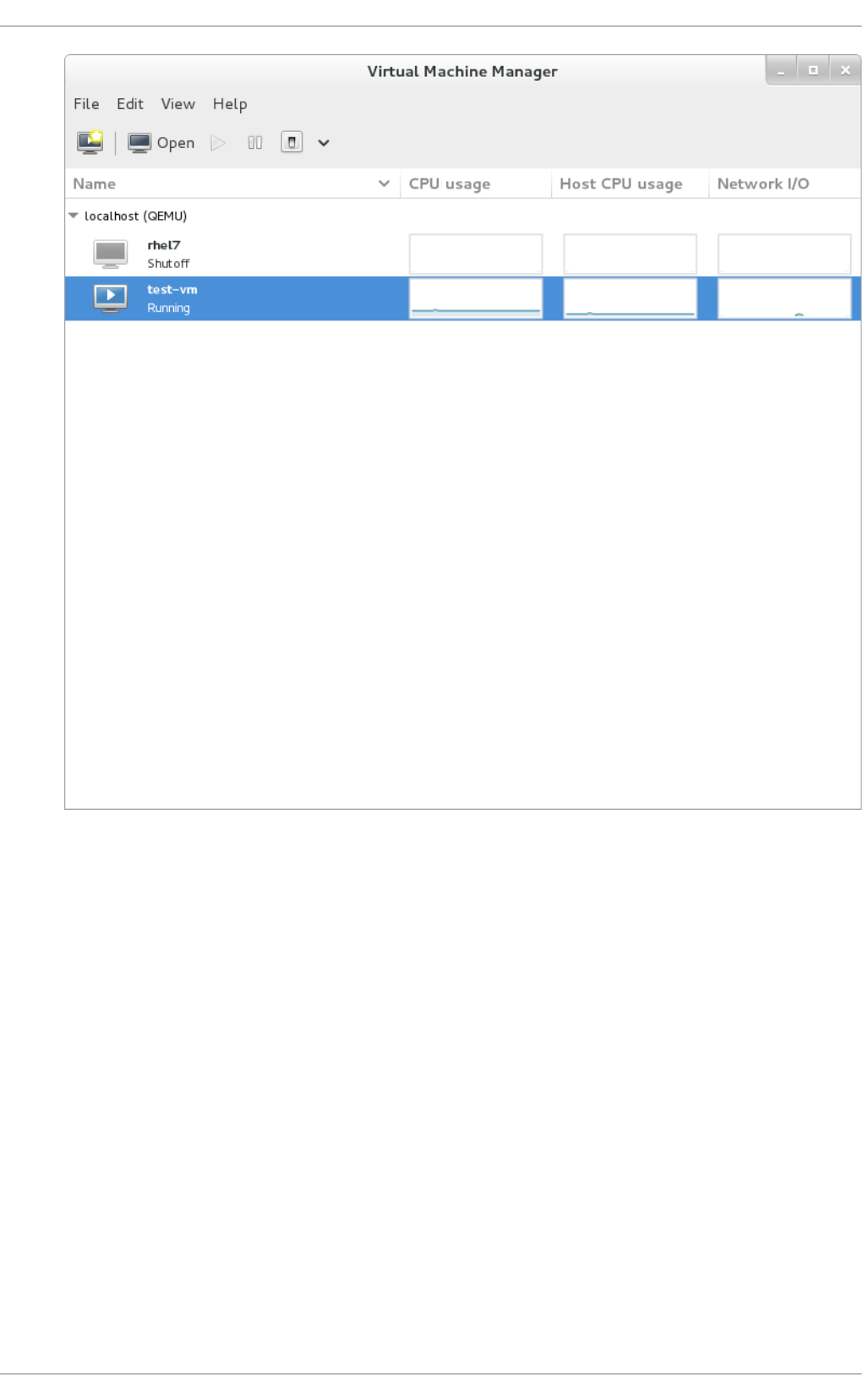

- 22.11. Displaying Network I/O

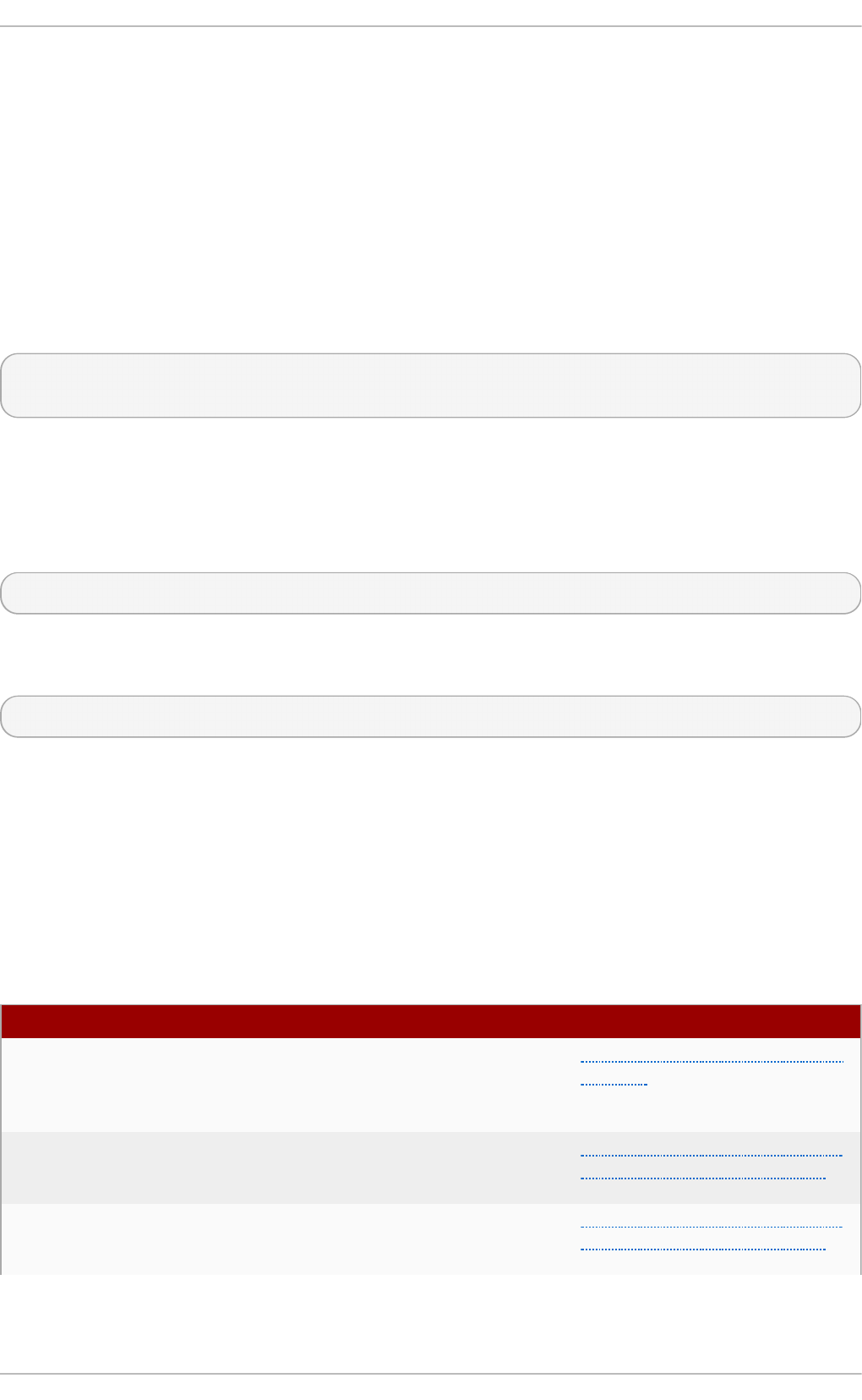

- Chapter 23. Managing guest virtual machines with virsh

- 23.1. Guest virtual machine states

- 23.2. Running the virsh program

- 23.3. Interactive mode commands

- 23.4. Displaying the virsh version

- 23.5. Getting help

- 23.6. Sending commands with echo

- 23.7. Connecting to the hypervisor with virsh connect

- 23.8. Displaying information about guest virtual machine

- 23.9. Guest virtual machine basic commands

- 23.9.1. Starting a virtual machine

- 23.9.2. Configuring a virtual machine to be started automatically at boot

- 23.9.3. Suspending a guest virtual machine

- 23.9.4. Suspending a running guest virtual machine

- 23.9.5. Waking up a guest virtual machine from pmsuspend state

- 23.9.6. Undefining a domain

- 23.9.7. Resuming a guest virtual machine

- 23.9.8. Saving a guest virtual machine

- 23.9.9. Updating the domain XML file that will be used for restoring a guest virtual machine

- 23.9.10. Extracting the domain XML file

- 23.9.11. Editing the guest virtual machine configuration files

- 23.9.12. Restoring a guest virtual machine

- 23.10. Shutting down, rebooting, and forcing a shutdown of a guest virtual machine

- 23.10.1. Shutting down a guest virtual machine

- 23.10.2. Shutting down Red Hat Enterprise Linux 6 guests on a Red Hat Enterprise Linux 7 host

- 23.10.3. Manipulating the libvirt-guests configuration settings

- 23.10.4. Rebooting a guest virtual machine

- 23.10.5. Forcing a guest virtual machine to stop

- 23.10.6. Resetting a virtual machine

- 23.10.7. Connecting the serial console for the guest virtual machine

- 23.10.8. Defining a guest virtual machine with an XML file

- 23.10.9. Injecting NMI

- 23.10.10. Displaying device block statistics

- 23.10.11. Retrieving network statistics

- 23.10.12. Modifying the link state of a guest virtual machine's virtual interface

- 23.10.13. Listing the link state of a guest virtual machine's virtual interface

- 23.10.14. Setting network interface bandwidth parameters

- 23.10.15. Retrieving memory statistics for a running guest virtual machine

- 23.10.16. Displaying errors on block devices

- 23.10.17. Displaying the block device size

- 23.10.18. Displaying the block devices associated with a domain

- 23.10.19. Displaying virtual interfaces associated with a guest virtual machine

- 23.10.20. Using blockcommit to shorten a backing chain

- 23.10.21. Using blockpull to shorten a backing chain

- 23.10.22. Using blockresize to change the size of a guest virtual machine path

- 23.10.23. Disk image management with live block copy

- 23.10.24. Displaying a URI for connection to a graphical display

- 23.10.25. Discarding blocks not in use

- 23.10.26. Guest virtual machine retrieval commands

- 23.10.27. Converting QEMU arguments to domain XML

- 23.10.28. Creating a dump file of a guest virtual machine's core

- 23.10.29. Creating a virtual machine XML dump (configuration file)

- 23.10.30. Creating a guest virtual machine from a configuration file

- 23.11. Editing a guest virtual machine's configuration file

- 23.11.1. Adding multifunction PCI devices to KVM guest virtual machines

- 23.11.2. Stopping a running guest virtual machine in order to restart it later

- 23.11.3. Displaying CPU statistics for a specified guest virtual machine

- 23.11.4. Saving a screenshot

- 23.11.5. Sending a keystroke combination to a specified guest virtual machine

- 23.11.6. Sending process signal names to virtual processes

- 23.11.7. Displaying the IP address and port number for the VNC display

- 23.12. NUMA node management

- 23.12.1. Displaying node information

- 23.12.2. Setting NUMA parameters

- 23.12.3. Displaying the amount of free memory in a NUMA cell

- 23.12.4. Displaying a CPU list

- 23.12.5. Displaying CPU statistics

- 23.12.6. Managing devices

- 23.12.7. Suspending the host physical machine

- 23.12.8. Setting and displaying the node memory parameters

- 23.12.9. Creating devices on host nodes

- 23.12.10. Detaching a node device

- 23.12.11. Dump a Device

- 23.12.12. List devices on a node

- 23.12.13. Triggering a reset for a node

- 23.13. Retrieving guest virtual machine information

- 23.14. Storage pool commands

- 23.15. Storage Volume Commands

- 23.16. Displaying per-guest virtual machine information

- 23.16.1. Displaying the guest virtual machines

- 23.16.2. Displaying virtual CPU information

- 23.16.3. Pinning vCPU to a host physical machine's CPU

- 23.16.4. Displaying information about the virtual CPU counts of a given domian

- 23.16.5. Configuring virtual CPU affinity

- 23.16.6. Configuring virtual CPU count

- 23.16.7. Configuring memory allocation

- 23.16.8. Changing the memory allocation for the domain

- 23.16.9. Displaying guest virtual machine block device information

- 23.16.10. Displaying guest virtual machine network device information

- 23.17. Managing virtual networks

- 23.17.1. Autostarting a virtual network

- 23.17.2. Creating a virtual network from an XML file

- 23.17.3. Defining a virtual network from an XML file

- 23.17.4. Stopping a virtual network

- 23.17.5. Creating a dump file

- 23.17.6. Eding a virtual network's XML configuration file

- 23.17.7. Getting information about a virtual network

- 23.17.8. Listing information about a virtual network

- 23.17.9. Converting a network UUID to network name

- 23.17.10. Starting a (previously defined) inactive network

- 23.17.11. Undefining the configuration for an inactive network

- 23.17.12. Converting a network name to network UUID

- 23.17.13. Updating an existing network definition file

- 23.17.14. Migrating guest virtual machines with virsh

- 23.17.15. Setting a static IP address for the guest virtual machine

- 23.18. Interface Commands

- 23.18.1. Defining and starting a host physical machine interface via an XML file

- 23.18.2. Editing the XML configuration file for the host interface

- 23.18.3. Listing active host interfaces

- 23.18.4. Converting a MAC address into an interface name

- 23.18.5. Stopping a specific host physical machine interface

- 23.18.6. Displaying the host configuration file

- 23.18.7. Creating bridge devices

- 23.18.8. Tearing down a bridge device

- 23.18.9. Manipulating interface snapshots

- 23.19. Managing snapshots

- 23.19.1. Creating Snapshots

- 23.19.2. Creating a snapshot for the current guest virtual machine

- 23.19.3. Taking a snapshot of the current guest virtual machine

- 23.19.4. snapshot-edit-domain

- 23.19.5. snapshot-info-domain

- 23.19.6. snapshot-list-domain

- 23.19.7. snapshot-dumpxml domain snapshot

- 23.19.8. snapshot-parent guest virtual machine

- 23.19.9. snapshot-revert guest virtual machine

- 23.19.10. snapshot-delete domain

- 23.20. Guest virtual machine CPU model configuration

- 23.21. Configuring the guest virtual machine CPU model

- 23.22. Managing resources for guest virtual machines

- 23.23. Setting schedule parameters

- 23.24. Disk I/O throttling

- 23.25. Display or set block I/O parameters

- 23.26. Configuring memory Tuning

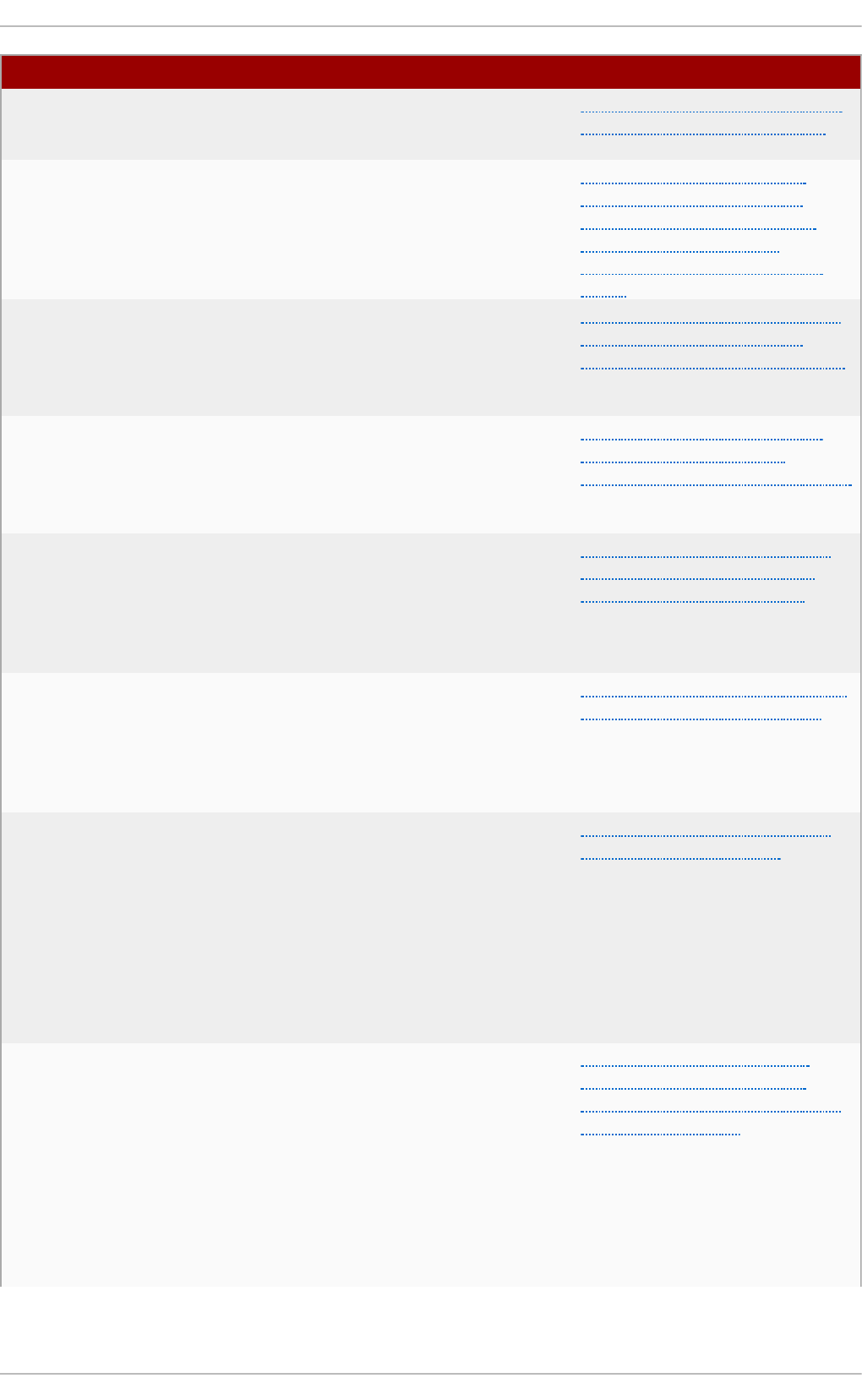

- Chapter 24. Guest virtual machine disk access with offline tools

- 24.1. Introduction

- 24.2. Terminology

- 24.3. Installation

- 24.4. The guestfish shell

- 24.5. Other commands

- 24.6. virt-rescue: The rescue shell

- 24.7. virt-df: Monitoring disk usage

- 24.8. virt-resize: resizing guest virtual machines offline

- 24.9. virt-inspector: inspecting guest virtual machines

- 24.10. virt-win-reg: Reading and editing the Windows Registry

- 24.11. Using the API from Programming Languages

- 24.12. virt-sysprep: resetting virtual machine settings

- Chapter 25. Graphic User Interface tools for guest virtual machine management

- Chapter 26. Manipulating the domain XML

- 26.1. General information and meta-data

- 26.2. Operating system booting

- 26.3. SMBIOS system information

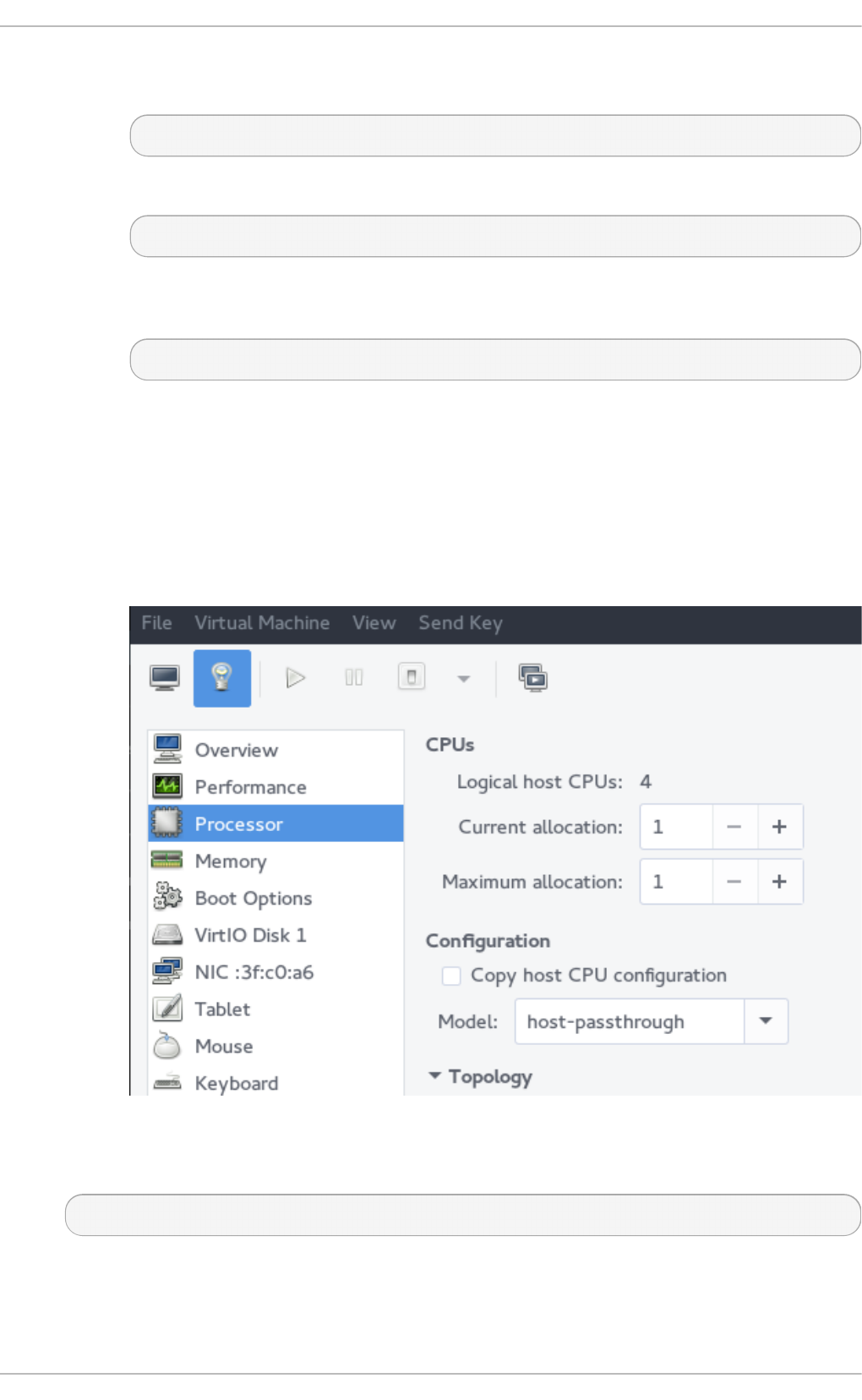

- 26.4. CPU allocation

- 26.5. CPU tuning

- 26.6. Memory backing

- 26.7. Memory tuning

- 26.8. Memory allocation

- 26.9. NUMA node tuning

- 26.10. Block I/O tuning

- 26.11. Resource partitioning

- 26.12. CPU models and topology

- 26.13. Events configuration

- 26.14. Power Management

- 26.15. Hypervisor features

- 26.16. Time keeping

- 26.17. Timer element attributes

- 26.18. Devices

- 26.18.1. Hard drives, floppy disks, CD-ROMs

- 26.18.2. Files ystems

- 26.18.3. Device addresses

- 26.18.4. Controllers

- 26.18.5. Device leases

- 26.18.6. Host physical machine device assignment

- 26.18.7. Redirected devices

- 26.18.8. Smartcard devices

- 26.18.9. Network interfaces

- 26.18.9.1. Virtual networks

- 26.18.9.2. Bridge to LAN

- 26.18.9.3. Setting a port masquerading range

- 26.18.9.4. User space SLIRP stack

- 26.18.9.5. Generic Ethernet connection

- 26.18.9.6. Direct attachment to physical interfaces

- 26.18.9.7. PCI passthrough

- 26.18.9.8. Multicast tunnel

- 26.18.9.9. TCP tunnel

- 26.18.9.10. Setting NIC driver-specific options

- 26.18.9.11. Overriding the target element

- 26.18.9.12. Specifying boot order

- 26.18.9.13. Interface ROM BIOS configuration

- 26.18.9.14. Quality of service (QoS)

- 26.18.9.15. Setting VLAN tag (on supported network types only)

- 26.18.9.16. Modifying virtual link state

- 26.18.10. Input devices

- 26.18.11. Hub devices

- 26.18.12. Graphical framebuffers

- 26.18.13. Video devices

- 26.18.14. Consoles, serial, and channel devices

- 26.18.15. Guest virtual machine interfaces

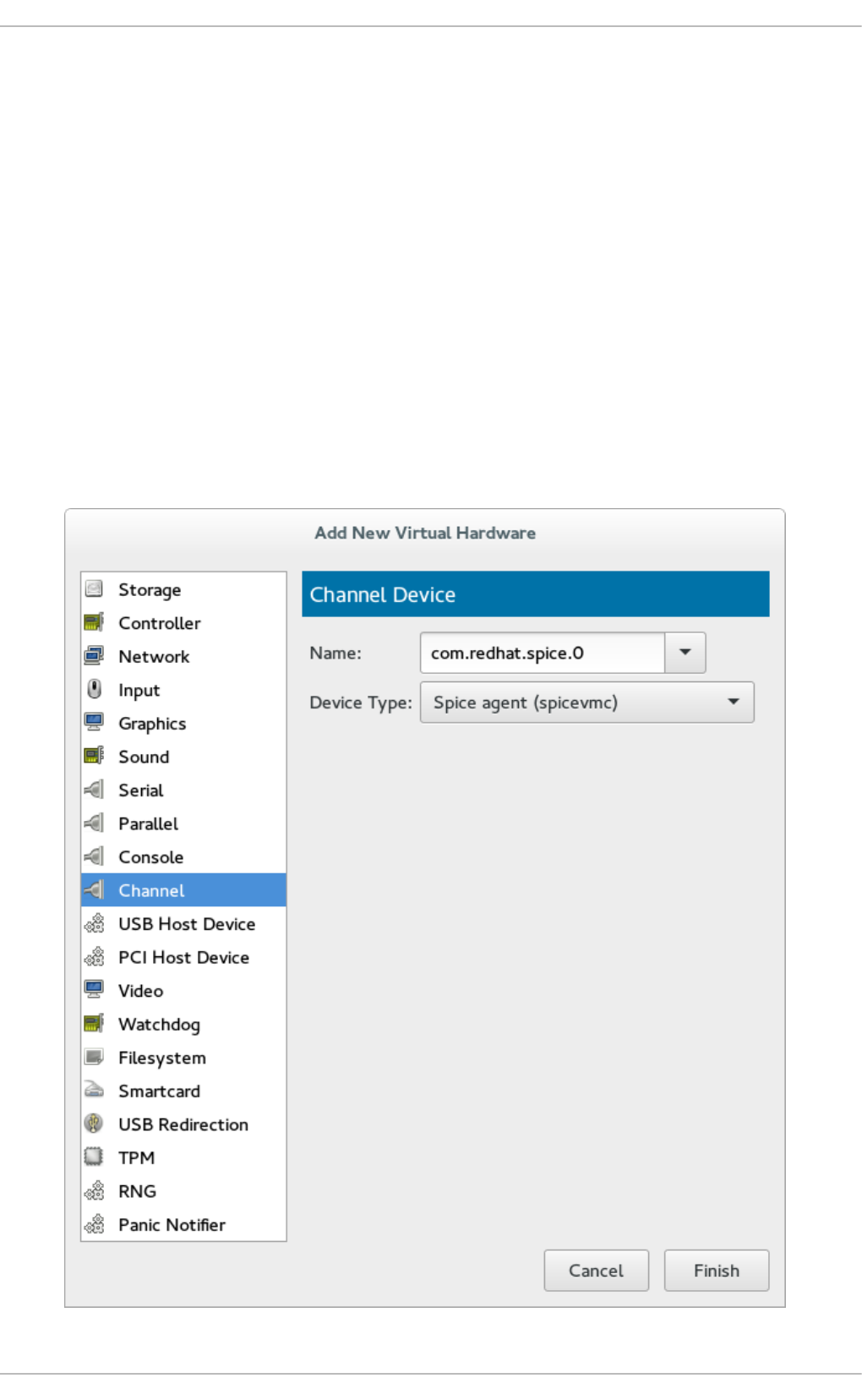

- 26.18.16. Channel

- 26.18.17. Host physical machine interface

- 26.18.18. Sound devices

- 26.18.19. Watchdog device

- 26.18.20. Setting a panic device

- 26.18.21. Memory balloon device

- 26.18.22. TPM devices

- 26.19. Storage pools

- 26.20. Storage Volumes

- 26.21. Security label

- 26.22. A Sample configuration file

- Part III. Appendices

- Appendix A. Troubleshooting

- A.1. Debugging and troubleshooting tools

- A.2. Preparing for disaster recovery

- A.3. Creating virsh dump files

- A.4. Capturing trace data on a constant basis using the Systemtap flight recorder

- A.5. kvm_stat

- A.6. Troubleshooting with serial consoles

- A.7. Virtualization log files

- A.8. Loop device errors

- A.9. Live Migration Errors

- A.10. Enabling Intel VT-x and AMD-V virtualization hardware extensions in BIOS

- A.11. Generating a new unique MAC address

- A.12. KVM networking performance

- A.13. Workaround for creating external snapshots with libvirt

- A.14. Missing characters on guest console with Japanese keyboard

- A.15. Guest virtual machine fails to shutdown

- A.16. Disable SMART disk monitoring for guest virtual machines

- A.17. libguestfs troubleshooting

- A.18. Common libvirt errors and troubleshooting

- A.18.1. libvirtd failed to start

- A.18.2. The URI failed to connect to the hypervisor

- A.18.3. The guest virtual machine cannot be started: internal error guest CPU is not compatible with host CPU

- A.18.4. Guest starting fails with error: monitor socket did not show up

- A.18.5. Internal error cannot find character device (null)

- A.18.6. Guest virtual machine booting stalls with error: No boot device

- A.18.7. Virtual network default has not been started

- A.18.8. PXE boot (or DHCP) on guest failed

- A.18.9. Guest can reach outside network, but cannot reach host when using macvtap interface

- A.18.10. Could not add rule to fixup DHCP response checksums on network 'default'

- A.18.11. Unable to add bridge br0 port vnet0: No such device

- A.18.12. Guest is unable to start with error: warning: could not open /dev/net/tun

- A.18.13. Migration fails with Error: unable to resolve address

- A.18.14. Migration fails with Unable to allow access for disk path: No such file or directory

- A.18.15. No guest virtual machines are present when libvirtd is started

- A.18.16. Unable to connect to server at 'host:16509': Connection refused ... error: failed to connect to the hypervisor

- A.18.17. Common XML errors

- Appendix B. Virtualization restrictions

- Appendix C. Additional resources

- Appendix D. Working with IOMMU Groups [1]

- Appendix E. NetKVM Driver Parameters

- Appendix F. Revision History

Laura Novich Dayle Parker Scott Radvan

Tahlia Richardson

Red Hat Enterprise Linux 7

Virtualization Deployment and

Administration Guide

Installing, configuring, and managing virtual machines on a Red Hat

Enterprise Linux physical machine

Red Hat Enterprise Linux 7 Virtualization Deployment and Administration

Guide

Installing, configuring, and managing virtual machines on a Red Hat

Enterprise Linux physical machine

Laura Novich

Red Hat Customer Content Services

lno vich@redhat.co m

Dayle Parker

Red Hat Customer Content Services

dayleparker@redhat.com

Sco tt Radvan

Red Hat Customer Content Services

sradvan@redhat.co m

Tahlia Richardson

Red Hat Customer Content Services

trichard@redhat.com

Legal Notice

Co pyright © 2015 Red Hat, Inc.

This do cument is licensed by Red Hat under the Creative Commons Attribution-ShareAlike 3.0

Unpo rted License. If yo u distribute this do cument, or a mo dified version of it, you must pro vide

attributio n to Red Hat, Inc. and provide a link to the original. If the document is mo dified, all Red

Hat trademarks must be remo ved.

Red Hat, as the licenso r o f this document, waives the right to enforce, and agrees not to assert,

Sectio n 4d of CC-BY-SA to the fullest extent permitted by applicable law.

Red Hat, Red Hat Enterprise Linux, the Shadowman lo go, JBoss, MetaMatrix, Fedora, the Infinity

Logo , and RHCE are trademarks of Red Hat, Inc., registered in the United States and other

countries.

Linux ® is the registered trademark of Linus To rvalds in the United States and other co untries.

Java ® is a registered trademark of Oracle and/or its affiliates.

XFS ® is a trademark of Silicon Graphics Internatio nal Corp. o r its subsidiaries in the United

States and/o r o ther countries.

MySQL ® is a registered trademark of MySQL AB in the United States, the Euro pean Unio n and

other countries.

No de.js ® is an official trademark of Jo yent. Red Hat So ftware Collections is no t fo rmally

related to or endo rsed by the official Jo yent No de.js o pen source or co mmercial project.

The OpenStack ® Word Mark and OpenStack Logo are either registered trademarks/service

marks or trademarks/service marks o f the OpenStack Foundatio n, in the United States and o ther

countries and are used with the OpenStack Foundatio n's permissio n. We are no t affiliated with,

endo rsed or spo nsored by the OpenStack Foundatio n, o r the OpenStack community.

All other trademarks are the property o f their respective o wners.

Abstract

This guide covers ho w to co nfigure a Red Hat Enterprise Linux 7 ho st physical machine and

how to install and configure guest virtual machines using the KVM hyperviso r. Other to pics

include PCI device configuration, SR-IOV, networking, sto rage, device and guest virtual machine

management, as well as troubleshooting, co mpatibility and restrictions. To expand your

expertise, you might also be interested in the Red Hat Enterprise Virtualizatio n (RH318) training

course.

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

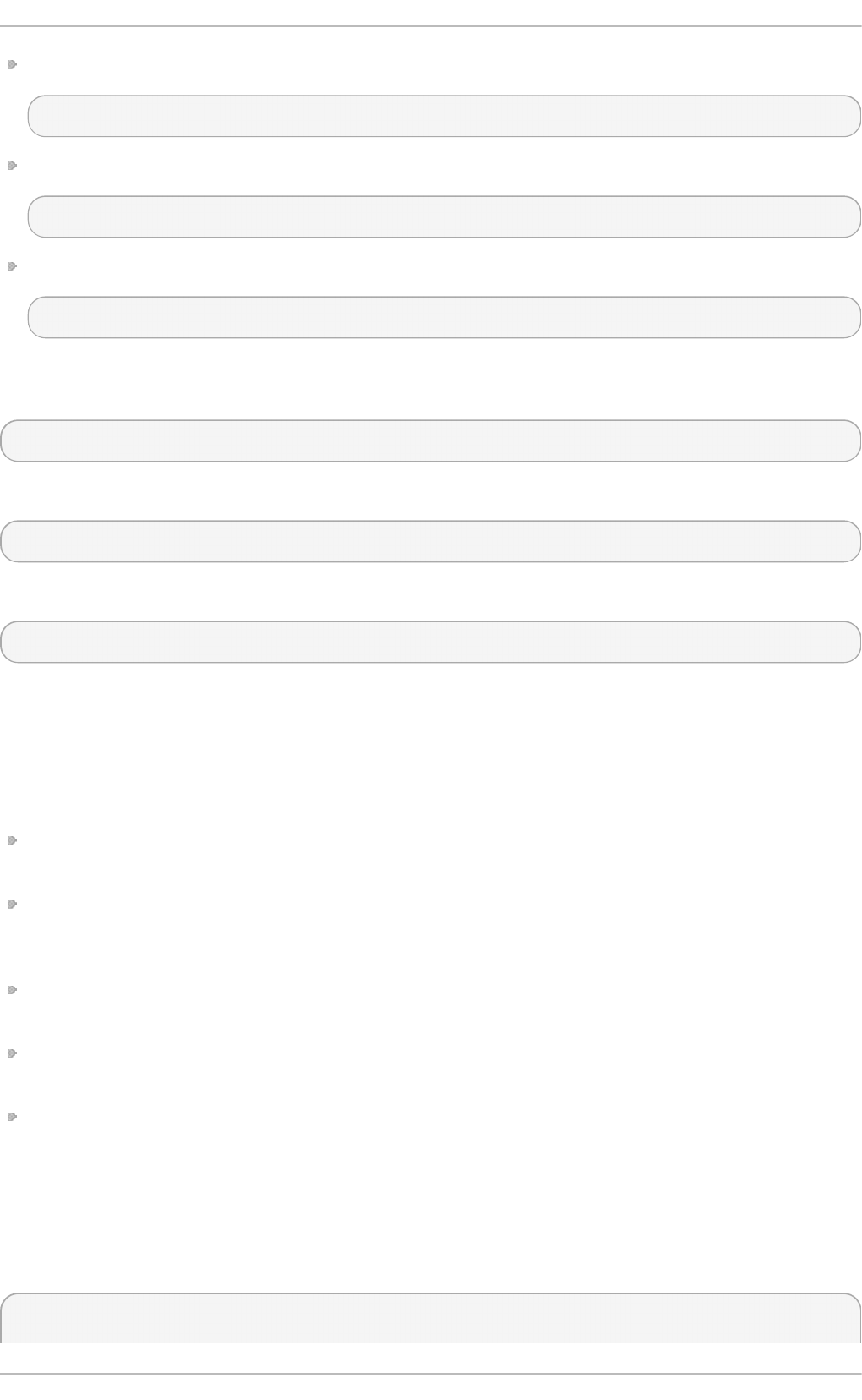

Table of Contents

Part I. Deployment

Chapt er 1 . Syst em requirement s

1.1. Ho st sys tem req uirements

1.2. KVM hyp erviso r req uirements

1.3. KVM g ues t virtual machine co mp atib ility

1.4. Sup p o rted g ues t CPU mo d els

Chapt er 2 . Inst alling t he virt ualiz at ion packages

2.1. Co nfig uring a virtualizatio n ho st d uring a new Red Hat Enterp rise Linux 7 installatio n

2.2. Installing virtualizatio n p ac kag es o n an exis ting Red Hat Enterp rise Linux system

2.3. Reg istering the hyp erviso r and virtual mac hine

Chapt er 3. Inst alling a virt ual machine

3.1. G ues t virtual machine p rereq uisites and c o ns id eratio ns

3.2. Creating g uests with virt-ins tall

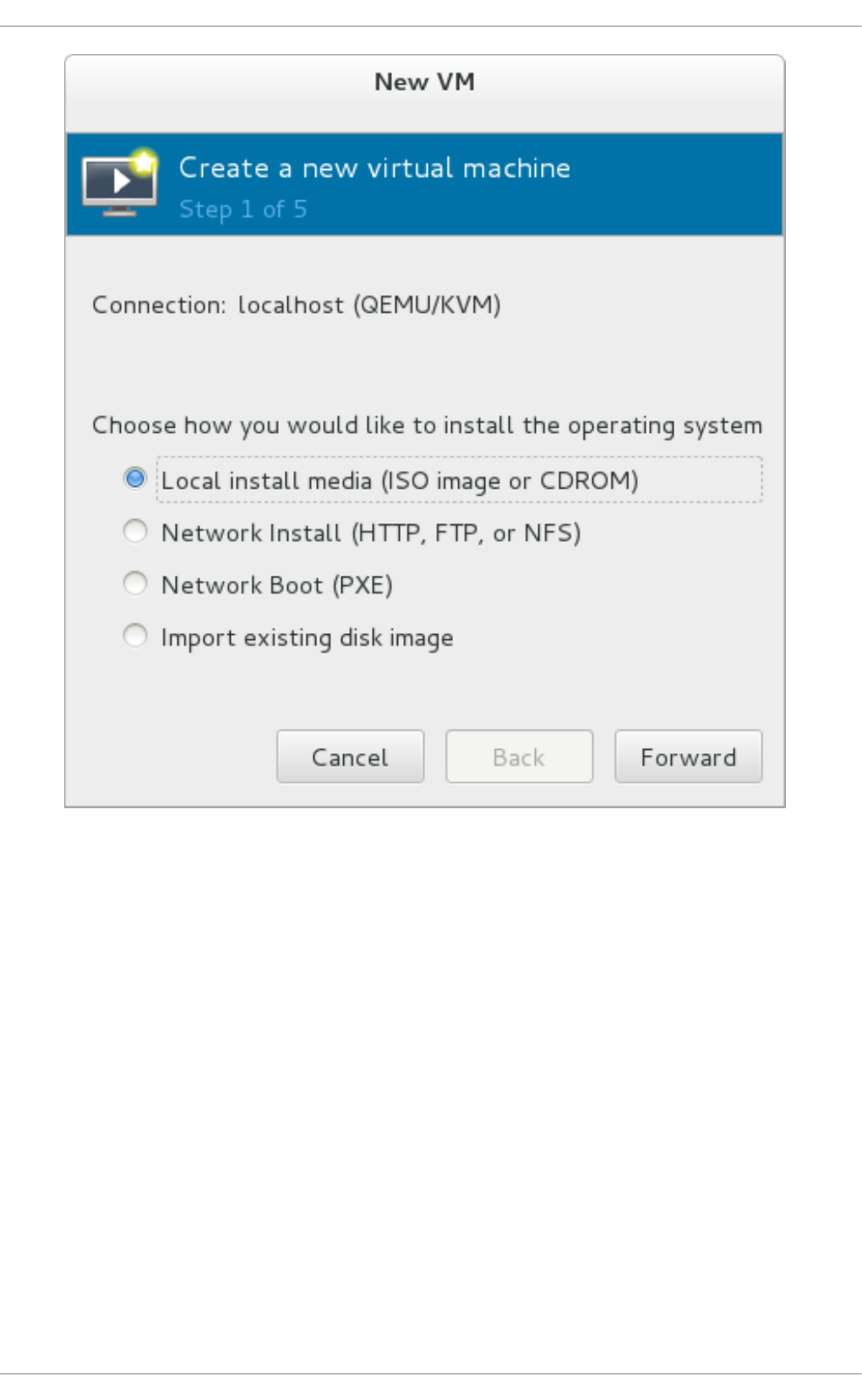

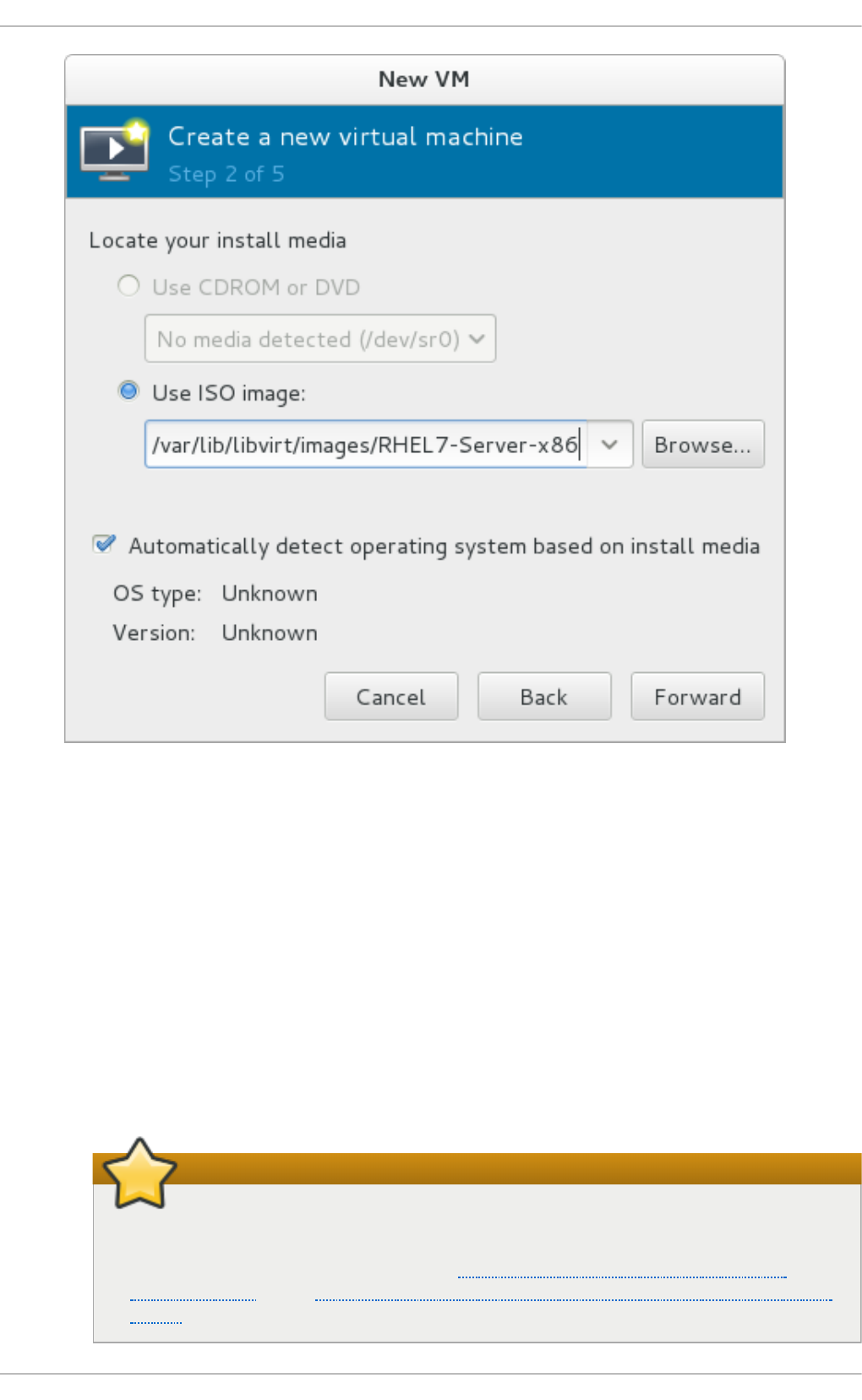

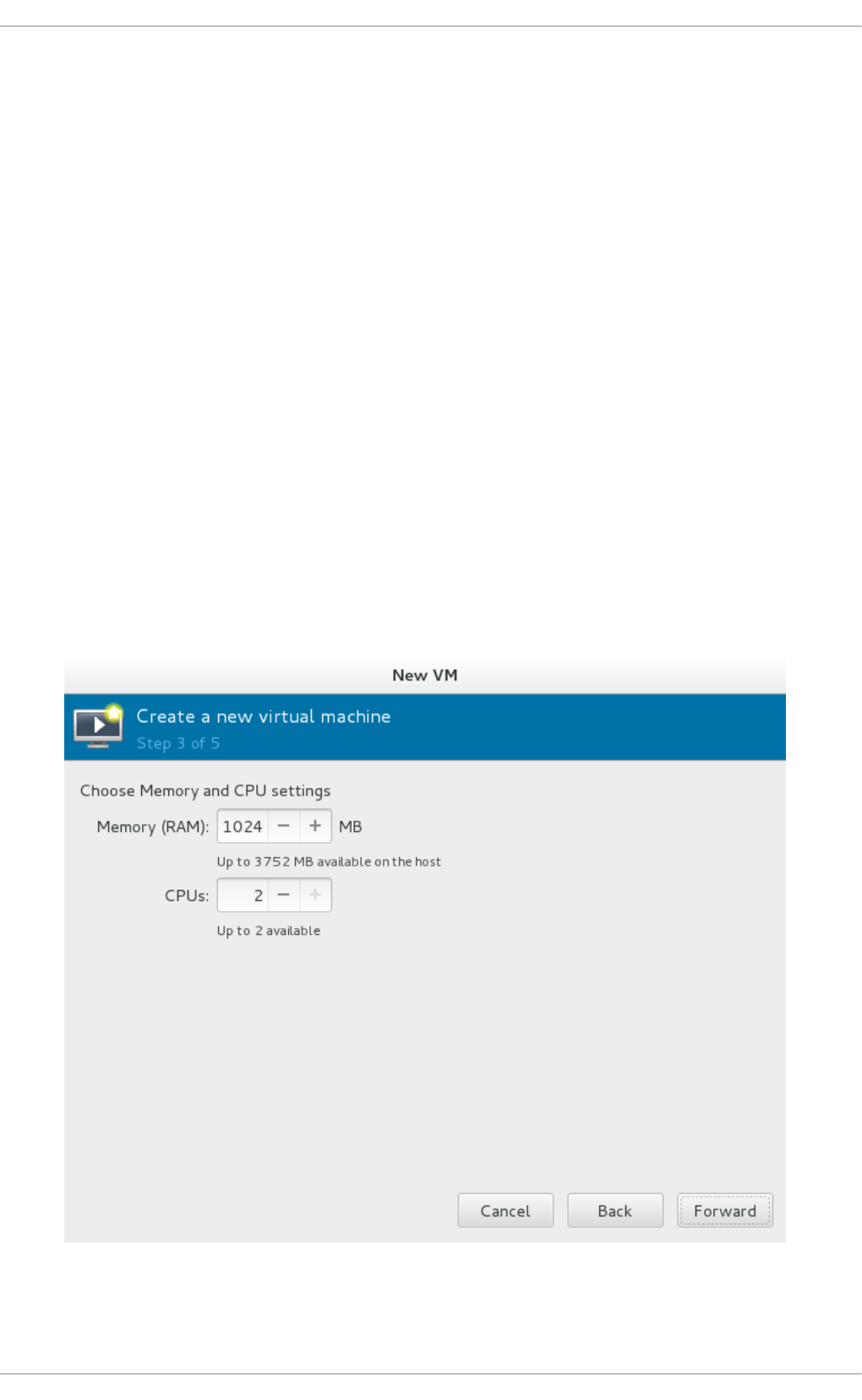

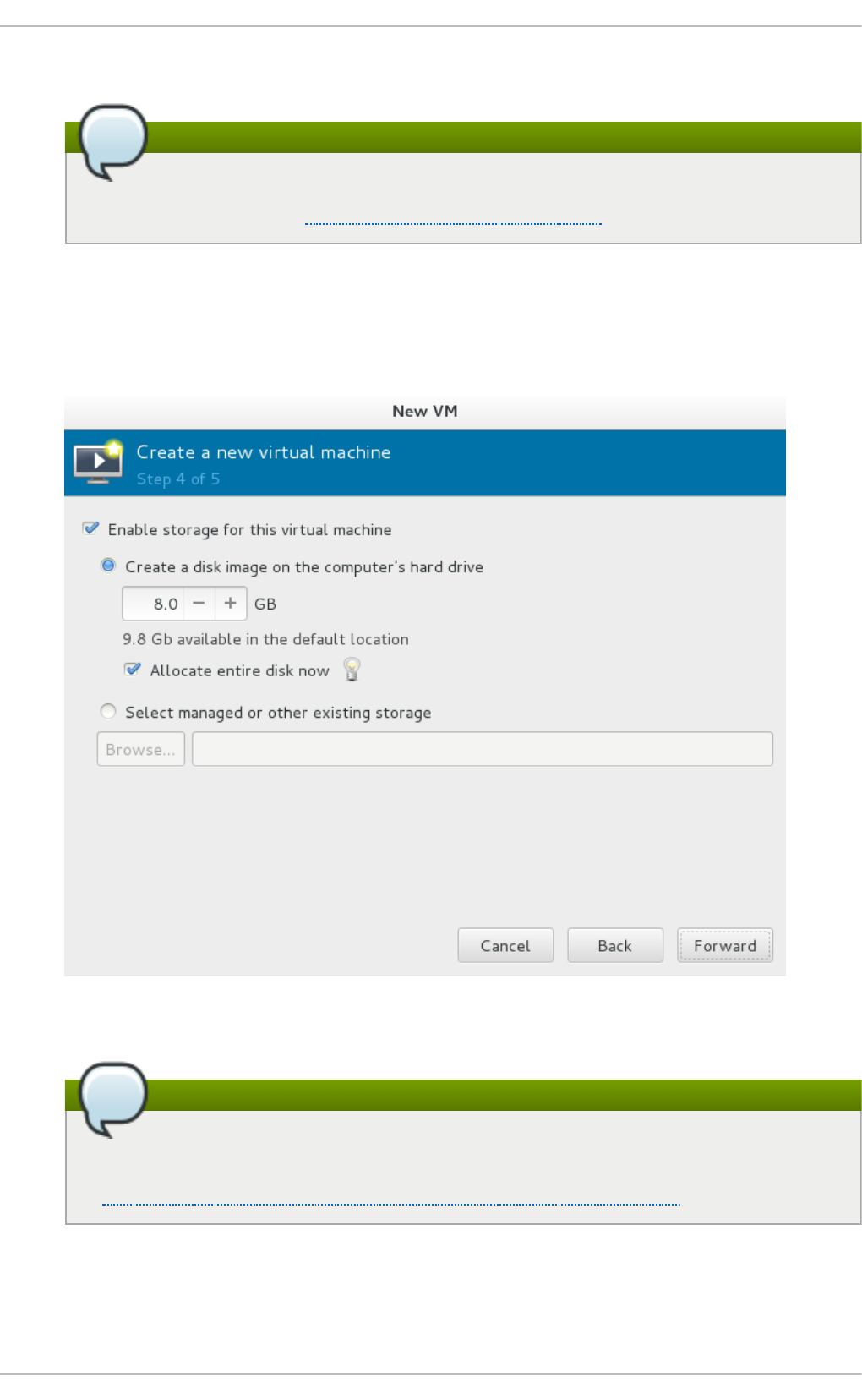

3.3. Creating g uests with virt-manag er

3.4. Co mp aris o n o f virt-install and virt-manag er installatio n o p tio ns

Chapt er 4 . Virt ualiz ing Red Hat Ent erprise Linux o n O t her Plat forms

4.1. O n VMware ESX

4.2. O n Hyp er-V

Chapt er 5. Inst alling a fully- virt ualized Windows guest

5.1. Using virt-ins tall to c reate a g ues t

5.2. Tip s fo r mo re effic iency with Wind o ws g ues t virtual machines

Chapt er 6 . KVM Para- virt ualiz ed (virt io) Drivers

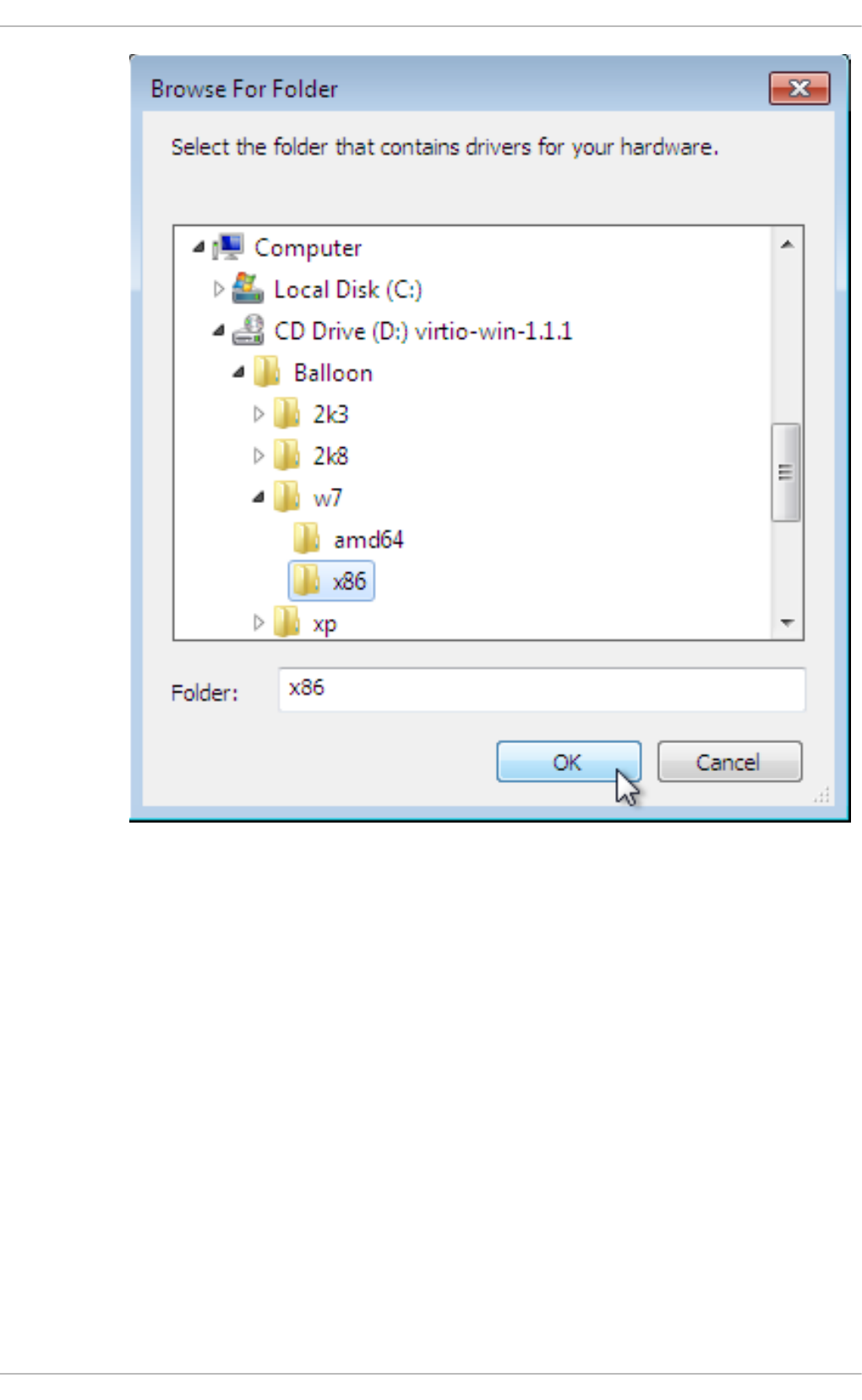

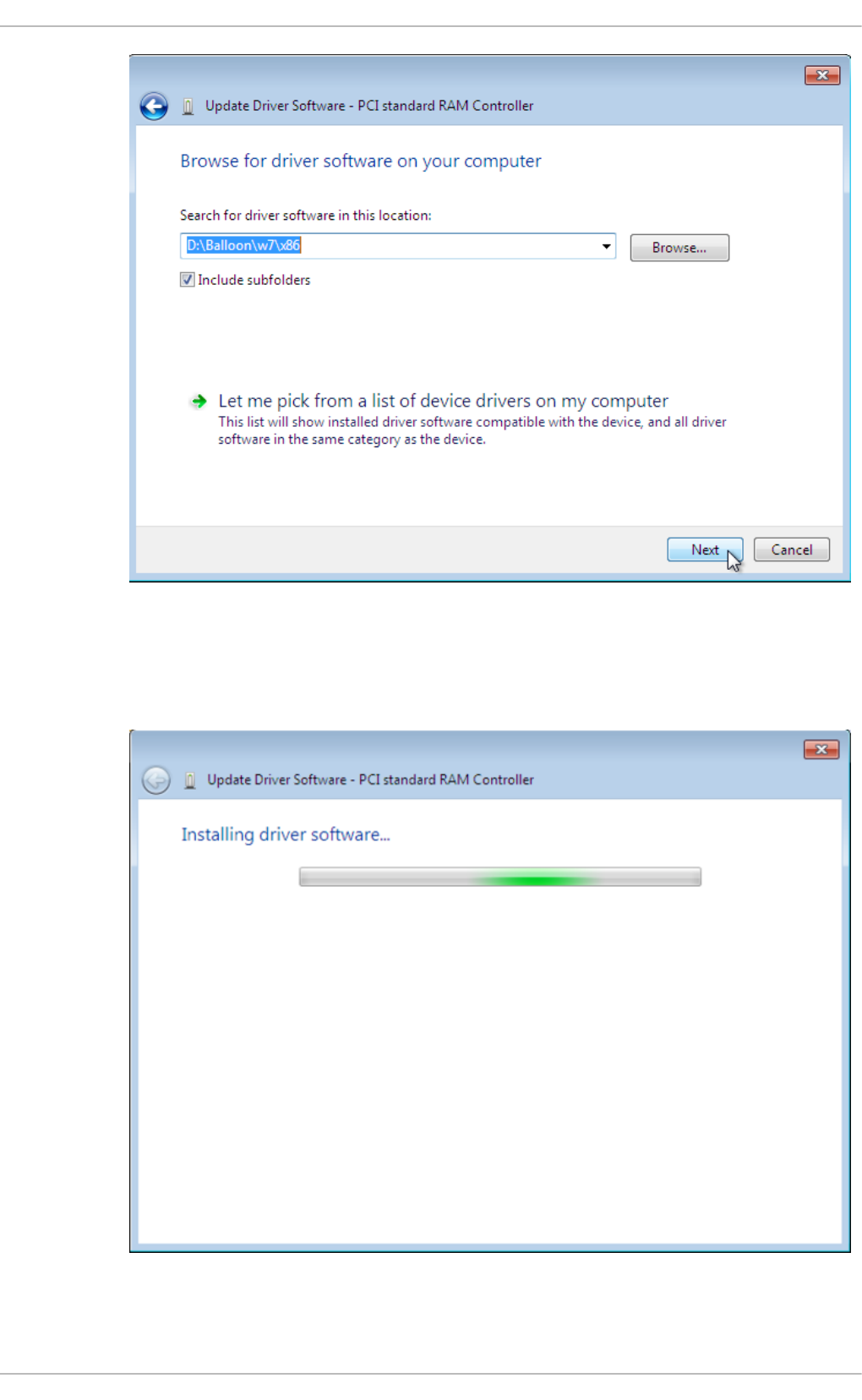

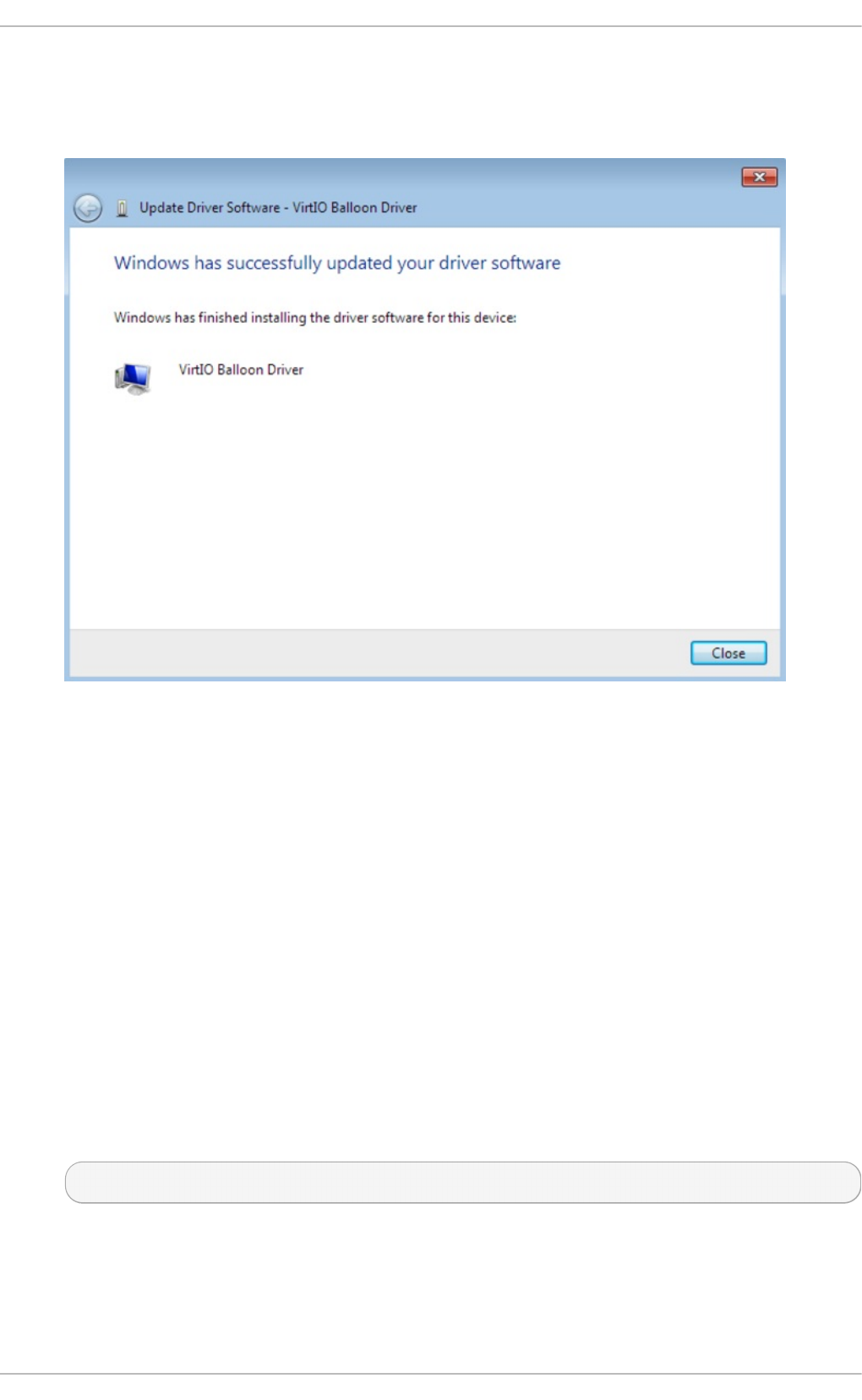

6 .1. Installing the KVM Wind o ws virtio d rivers

6 .2. Installing the d rivers o n an ins talled Wind o ws g uest virtual mac hine

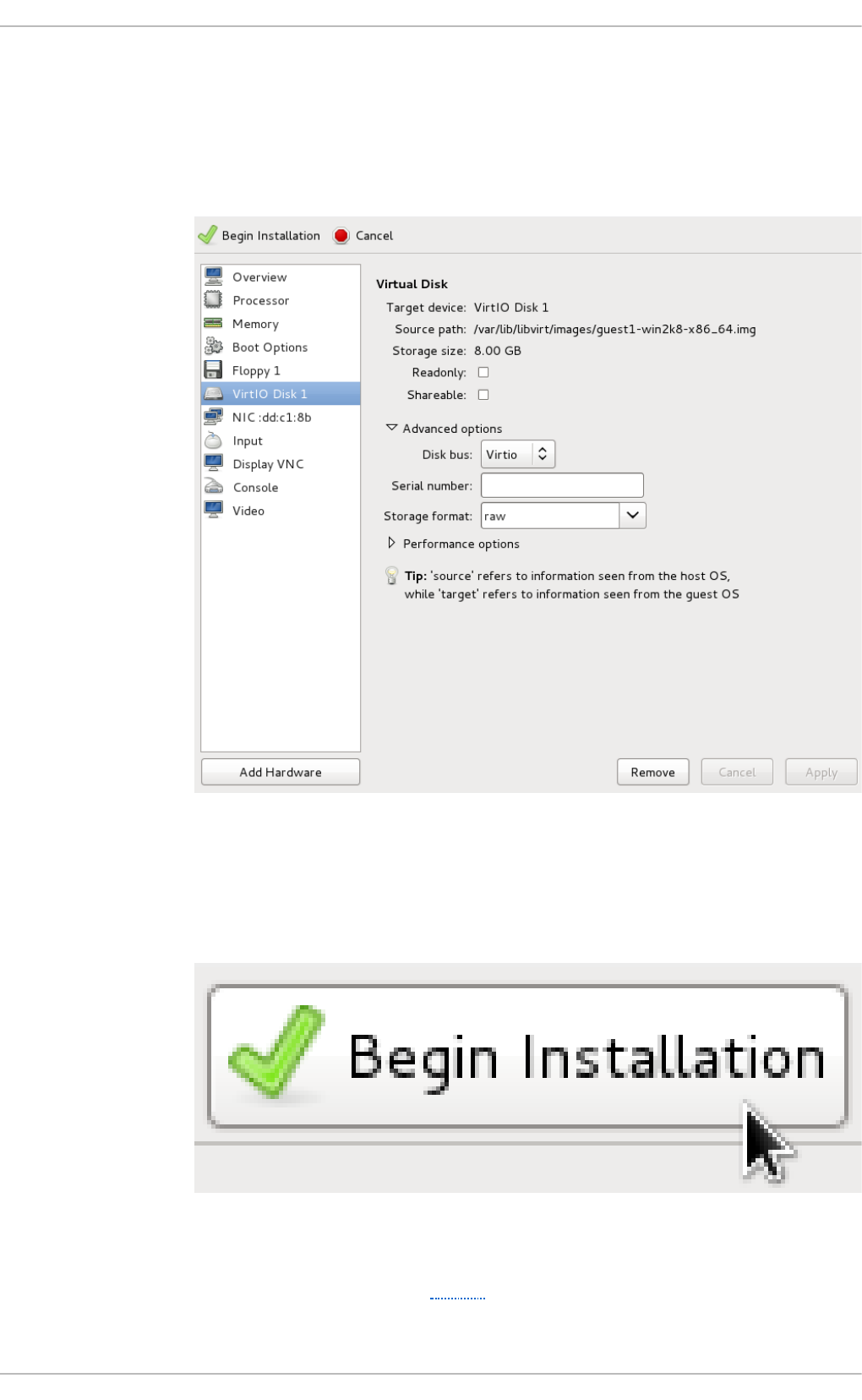

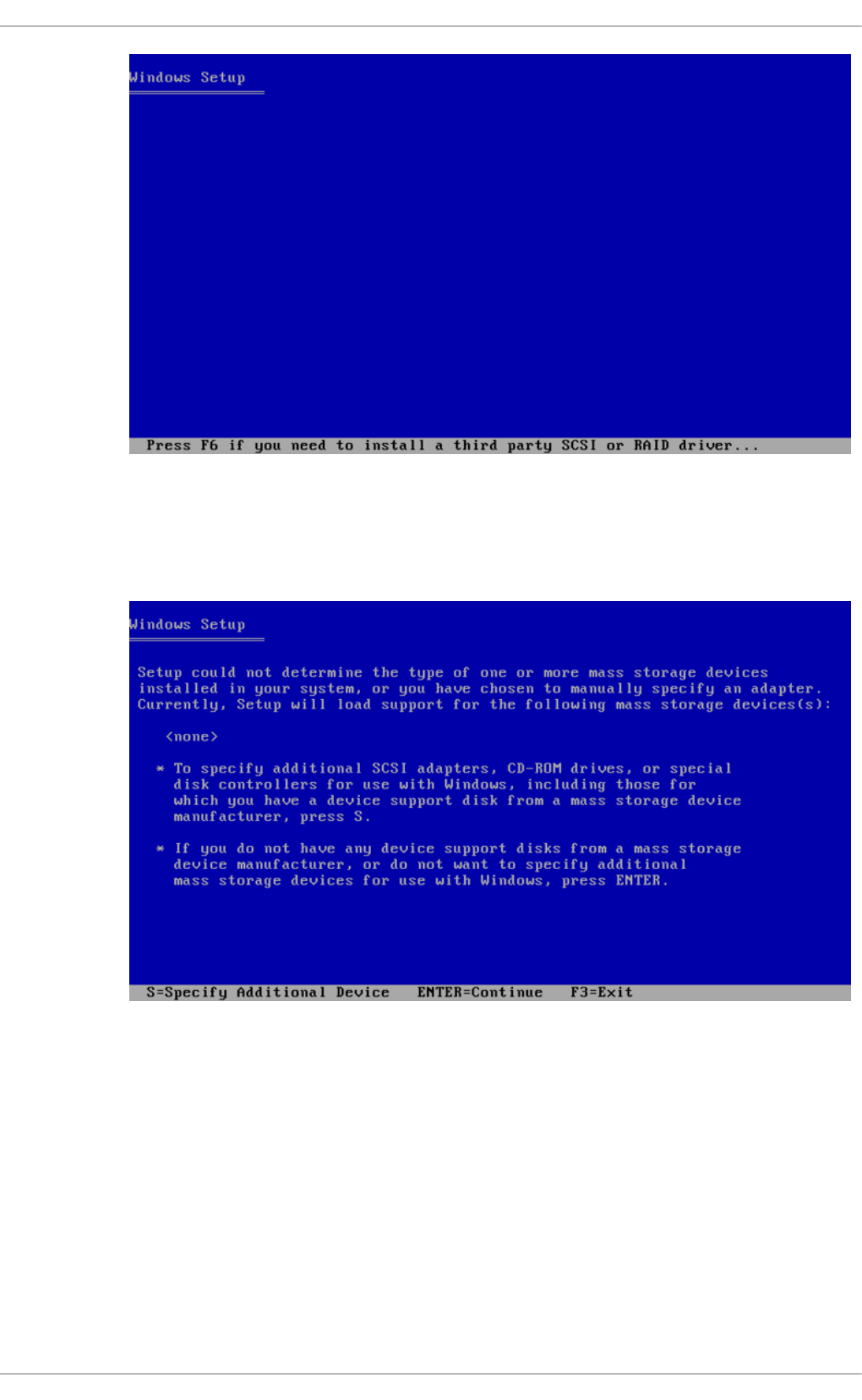

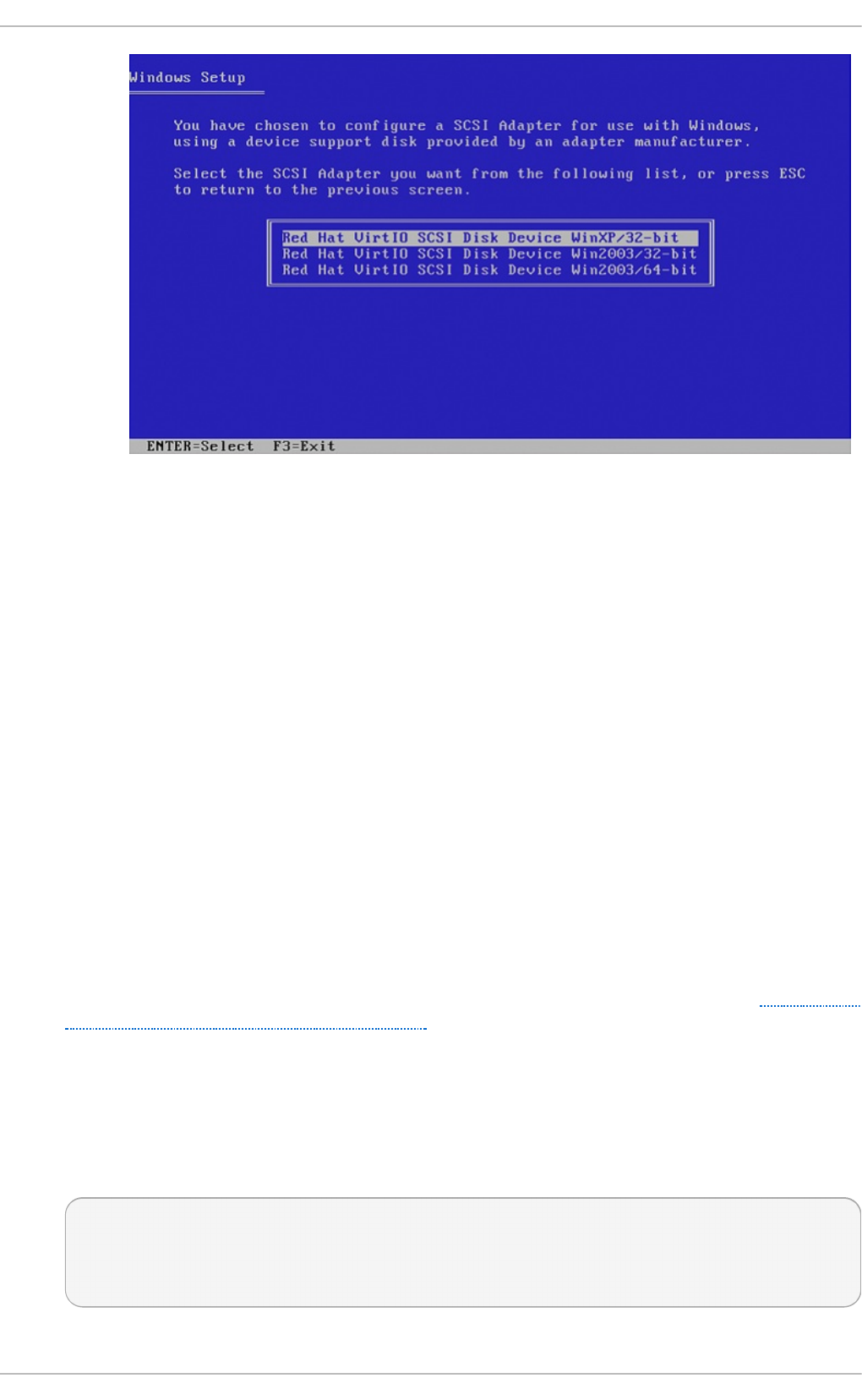

6 .3. Installing d rivers d uring the Wind o ws installatio n

6 .4. Us ing KVM virtio d rivers fo r existing d evices

6 .5. Us ing KVM virtio d rivers fo r new d evic es

Chapt er 7 . Net work configurat ion

7.1. Netwo rk Ad d res s T rans latio n (NAT) with lib virt

7.2. Disab ling vho s t-net

7.3. Enab ling vho s t-net zero -co p y

7.4. Brid g ed netwo rking

Chapt er 8 . O vercommit t ing wit h KVM

8 .1. Intro d uctio n

8 .2. O verco mmitting Memo ry

8 .3. O verco mmitting virtualiz ed CPUs (vCPUs)

Chapt er 9 . KVM guest t iming management

9 .1. Req uired p arameters fo r Red Hat Enterp ris e Linux g ues ts

9 .2. Steal time ac co unting

Chapt er 1 0 . Net work boot in g wit h libvirt

10 .1. Prep aring the b o o t server

10 .2. Bo o ting a g ues t us ing PXE

Chapt er 1 1 . Enhancin g virt ualiz at ion wit h t he Q EMU guest agen t and SPICE agent

11.1. Q EMU g uest ag ent

6

7

7

8

9

9

1 1

11

15

16

2 2

22

22

26

36

38

38

38

4 0

40

41

4 3

44

45

54

6 3

6 4

6 9

6 9

70

71

71

7 6

76

76

77

7 9

8 0

8 2

8 3

8 3

8 4

8 6

8 6

T able of Cont ent s

1

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

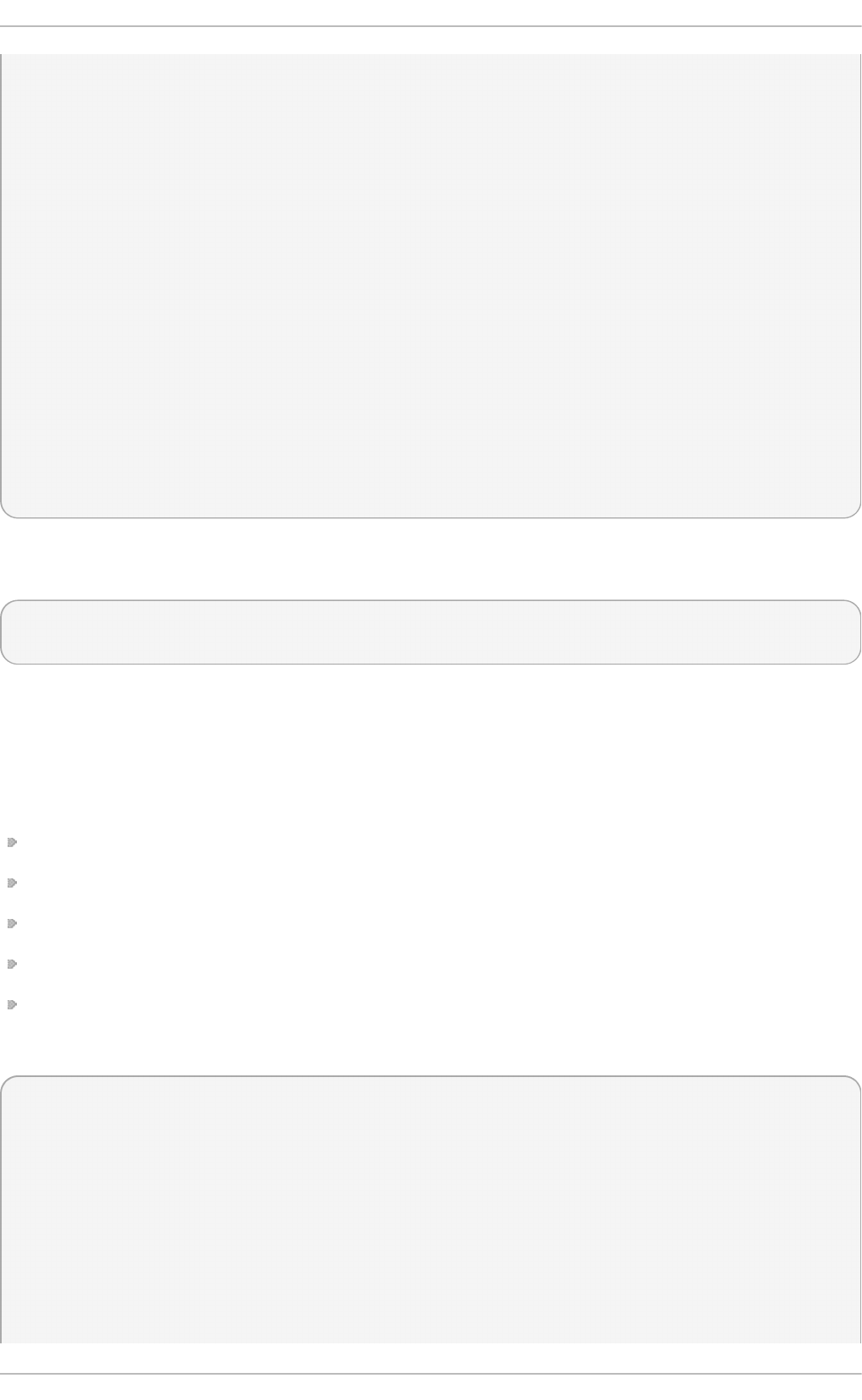

11.2. Us ing the Q EMU g ues t ag ent with lib virt

11.3. SPICE ag ent

Chapt er 1 2 . Nest ed Virt ualizat ion

12.1. O verview

12.2. Setup

12.3. Restrictio ns and Limitatio ns

Part II. Administ rat ion

Chapt er 1 3. Securing t he host physical machin e and improving performance

13.1. Sec urity Dep lo yment Plan

13.2. Client access c o ntro l

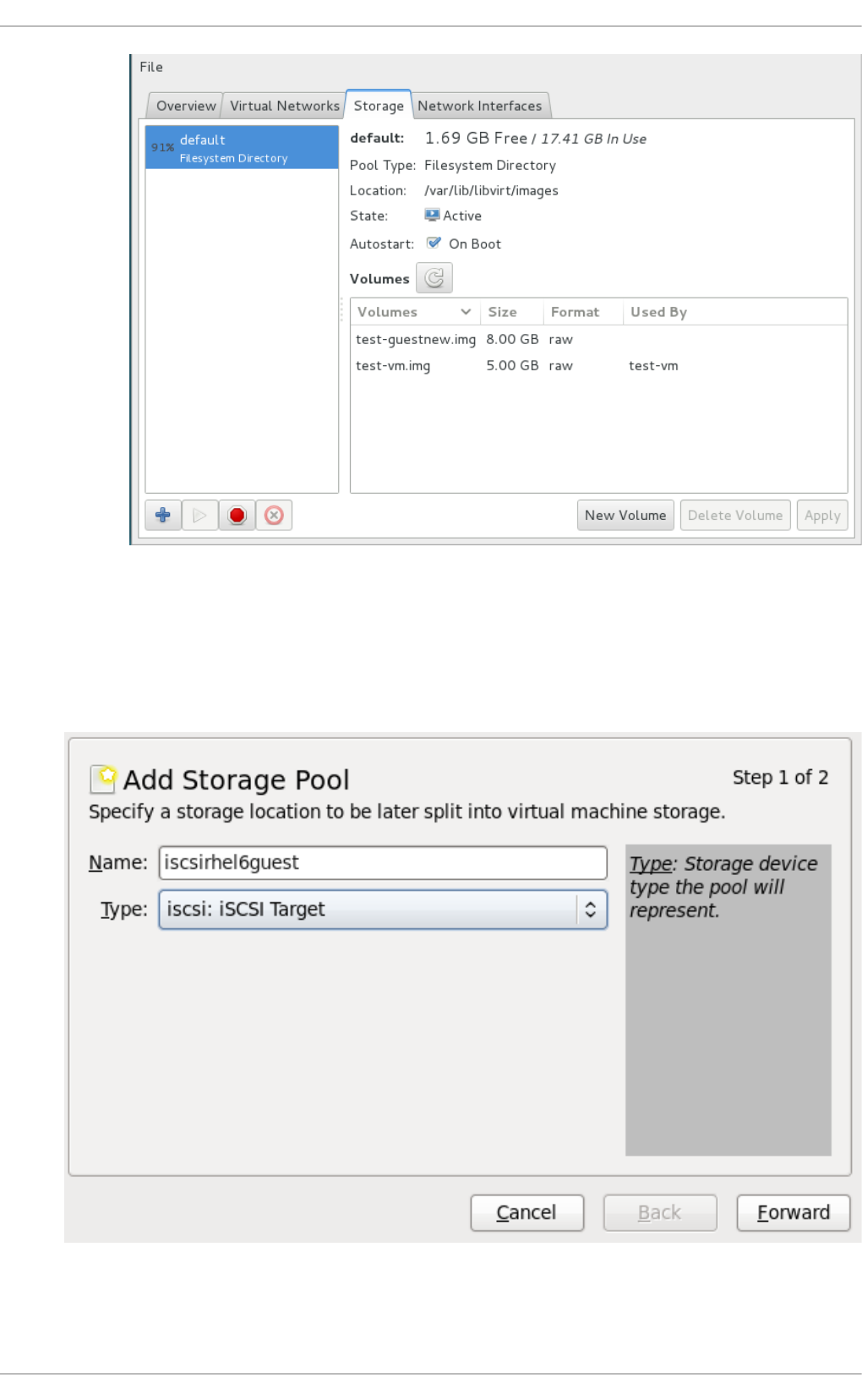

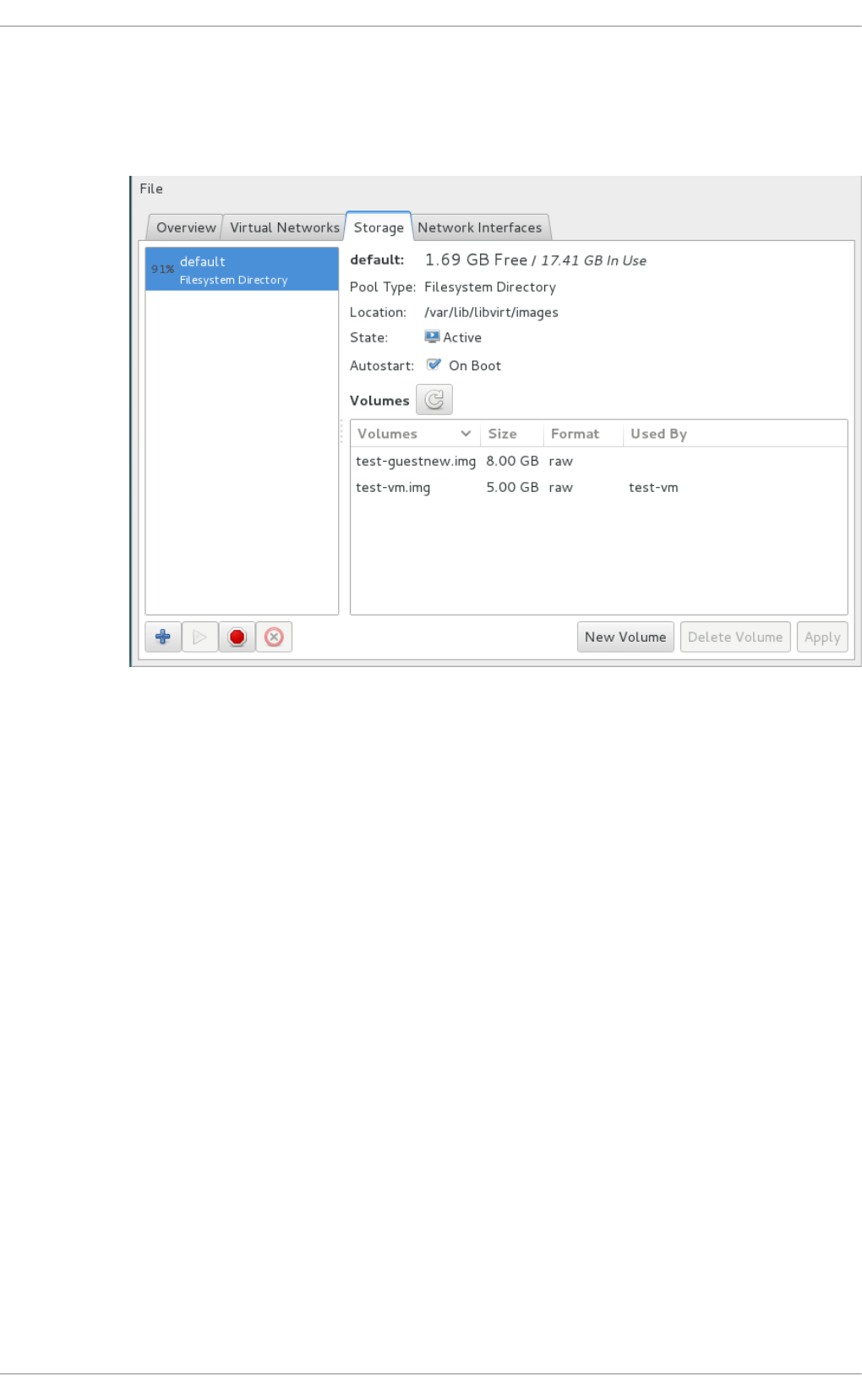

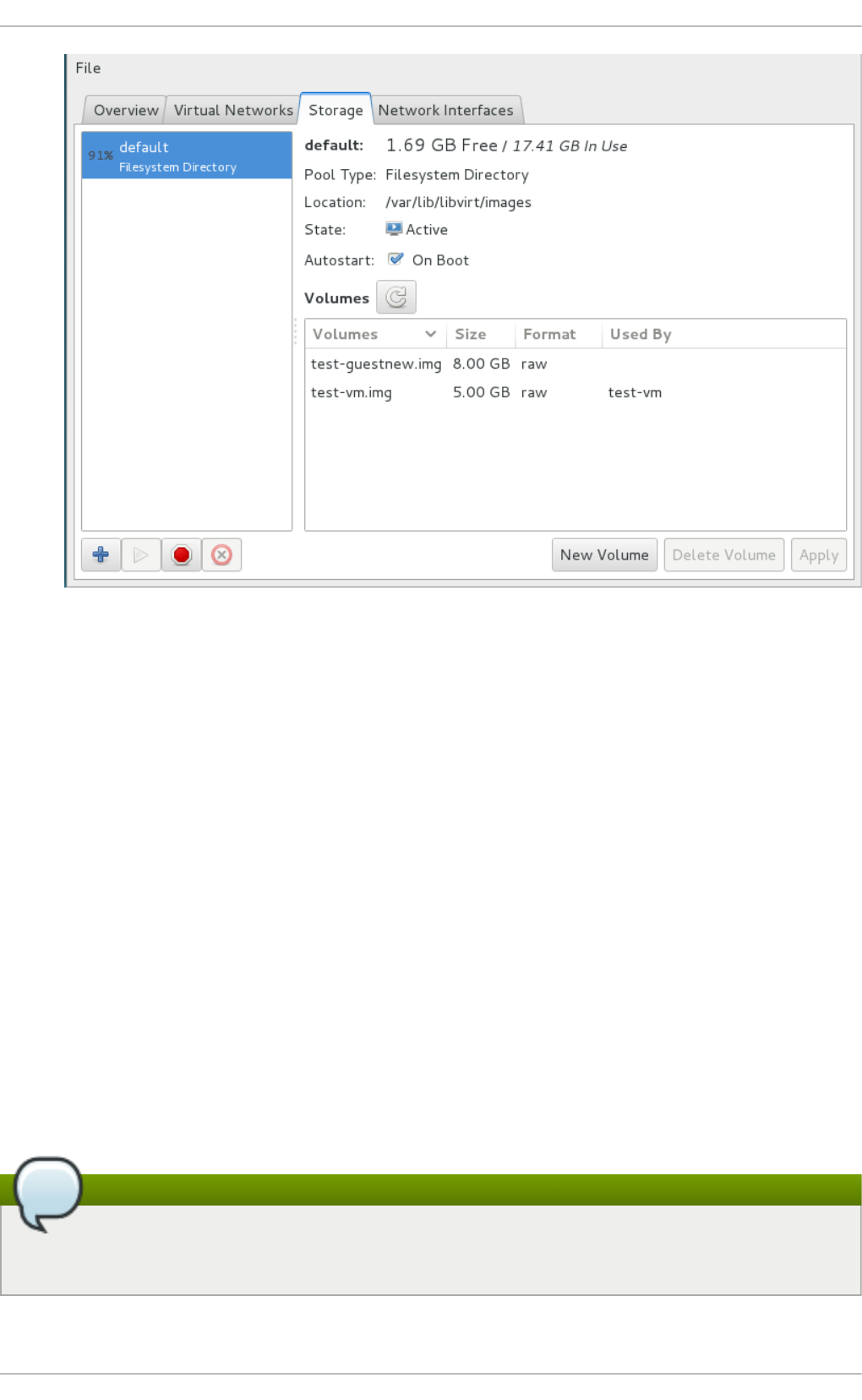

Chapt er 1 4 . St orag e po ols

14.1. Disk-b as ed sto rag e p o o ls

14.2. Partitio n-b ased s to rag e p o o ls

14.3. Directo ry-b ased sto rag e p o o ls

14.4. LVM-b ased sto rag e p o o ls

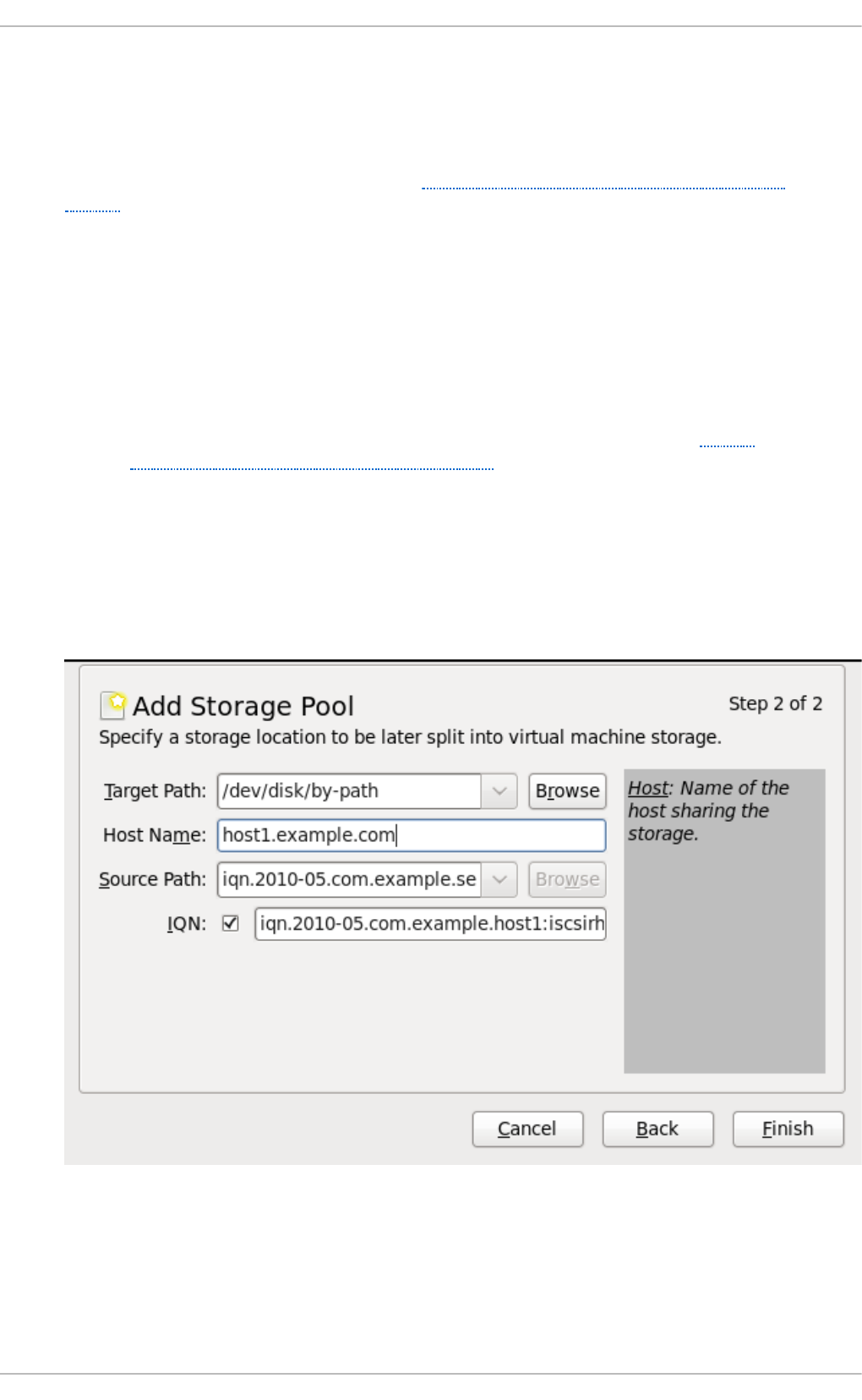

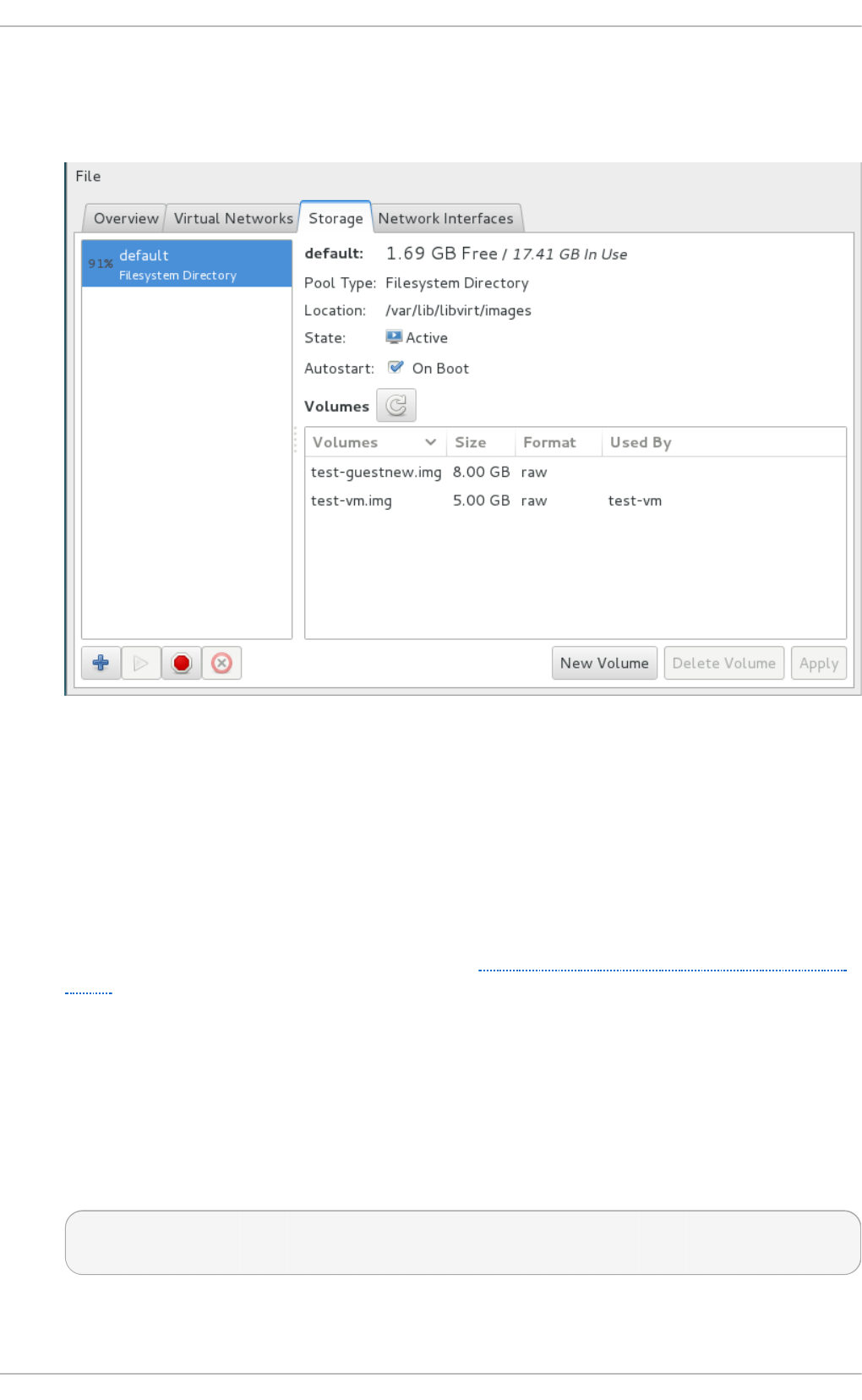

14.5. iSCSI-b ased sto rag e p o o ls

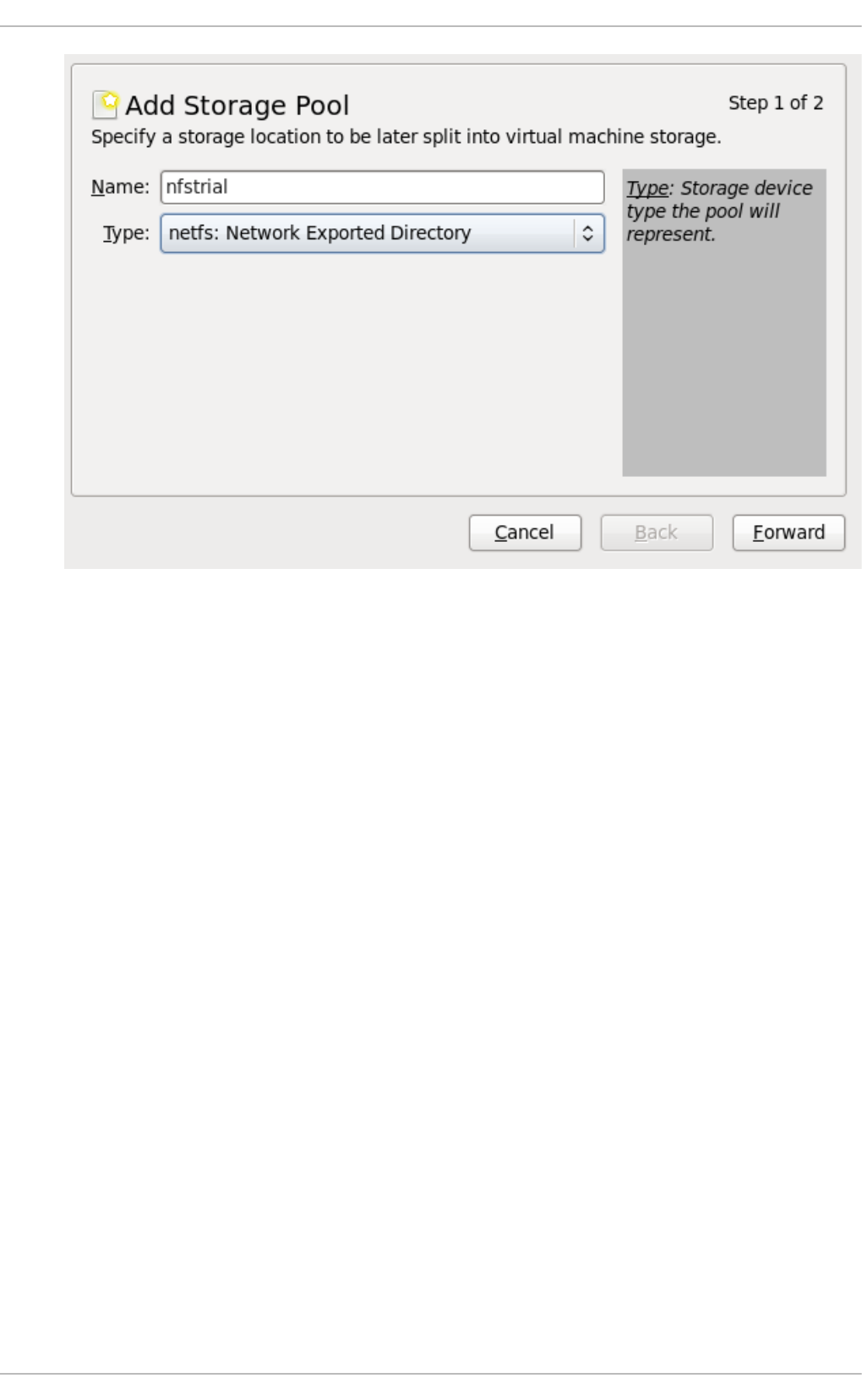

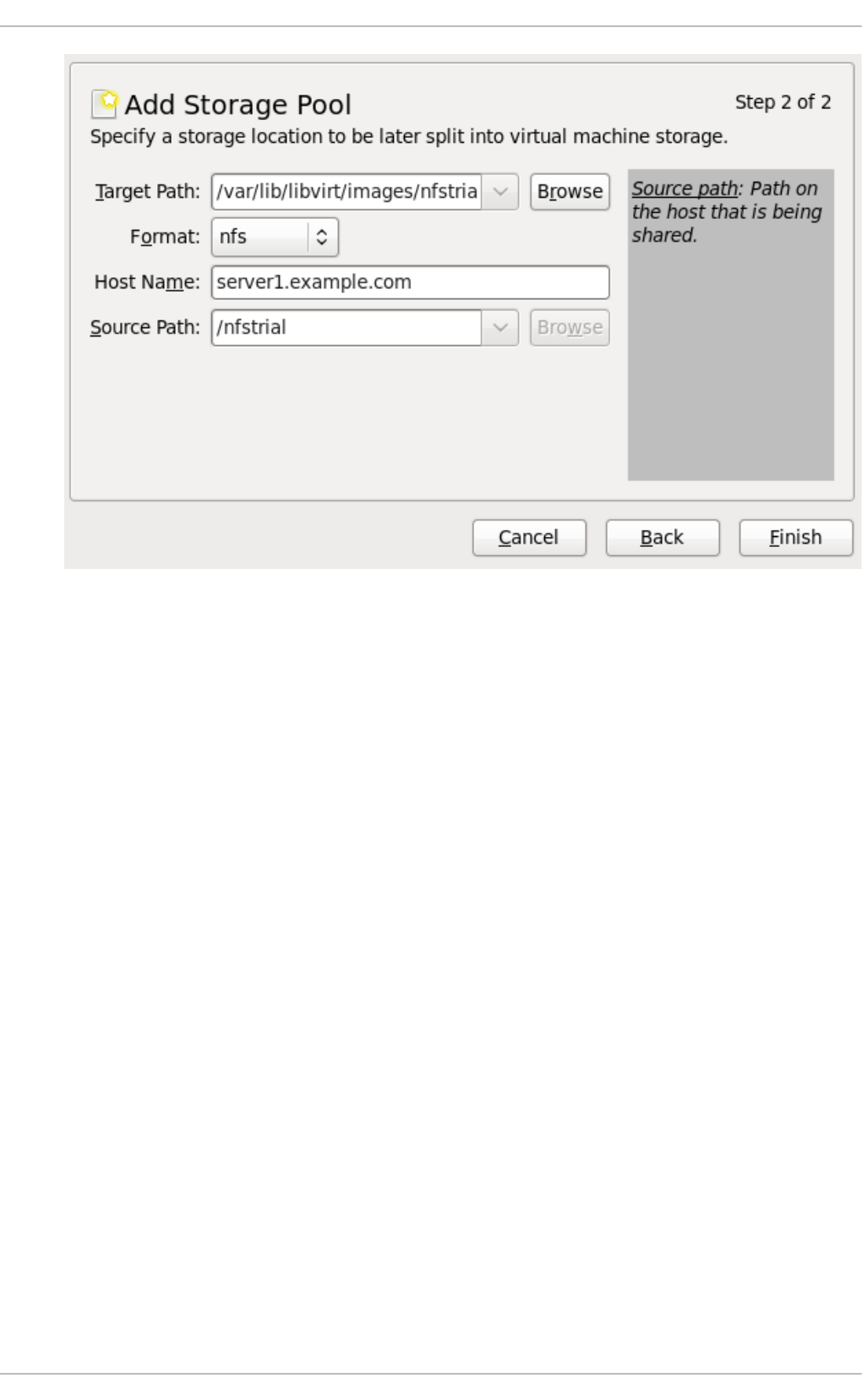

14.6 . NFS-b ased s to rag e p o o ls

14.7. Us ing a NPIV virtual ad ap ter (vHBA) with SCSI d evices

Chapt er 1 5. St orage Volu mes

15.1. Intro d uctio n

15.2. Creating vo lumes

15.3. Clo ning vo lumes

15.4. Deleting and remo ving vo lumes

15.5. Ad d ing sto rag e d evices to g uests

Chapt er 1 6 . Usin g qemu- img

16 .1. Chec king the d isk imag e

16 .2. Co mmitting chang es to an imag e

16 .3. Co nverting an exis ting imag e to ano ther fo rmat

16 .4. Creating and fo rmatting new imag es o r d evices

16 .5. Dis p laying imag e info rmatio n

16 .6 . Re-b asing a b acking file o f an imag e

16 .7. Re-sizing the d isk imag e

16 .8 . Listing , creating , ap p lying , and d eleting a s nap s ho t

16 .9 . Sup p o rted q emu-img fo rmats

Chapt er 1 7 . KVM live migrat io n

17.1. Live mig ratio n req uirements

17.2. Live mig ratio n and Red Hat Enterp rise Linux vers io n co mp atib ility

17.3. Shared sto rag e examp le: NFS fo r a s imp le mig ratio n

17.4. Live KVM mig ratio n with virsh

17.5. Mig rating with virt-manag er

Chapt er 1 8 . G uest virt ual machine device configurat ion

18 .1. PCI d evices

18 .2. USB d evic es

18 .3. Co nfig uring d evic e co ntro llers

18 .4. Setting ad d ress es fo r d evices

18 .5. Rand o m numb er g enerato r d evic e

18 .6 . Ass ig ning G PU d evic es

9 3

9 5

9 9

9 9

9 9

10 1

102

103

10 3

10 4

106

10 8

111

118

124

133

146

150

1 56

156

157

158

159

159

170

170

170

170

171

171

171

172

172

173

174

174

176

177

178

18 3

190

19 0

20 8

20 9

213

214

217

Virt ualizat ion Deployment and Administ rat ion G uide

2

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

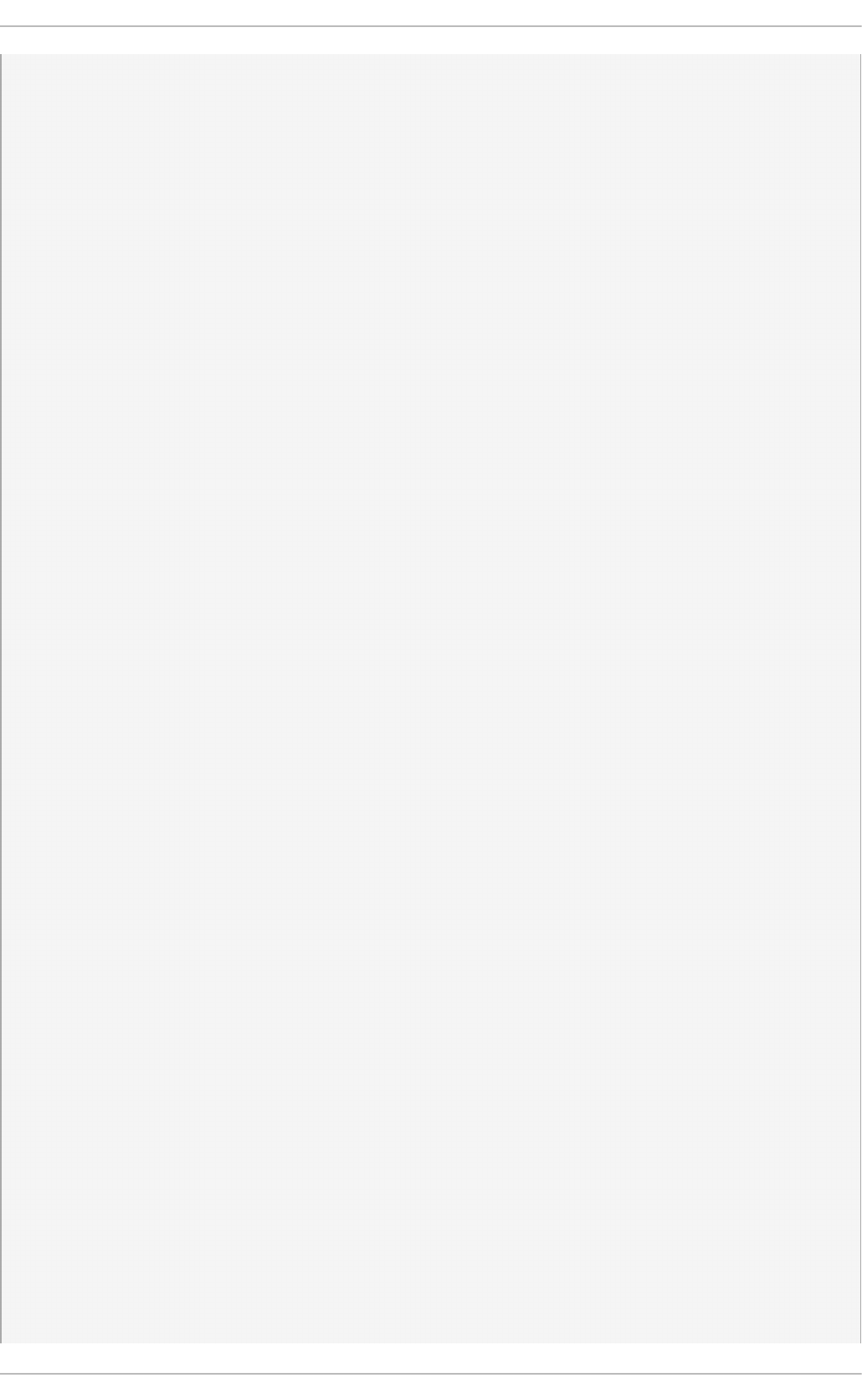

Chapt er 1 9 . SR- IO V

19 .1. Ad vantag es o f SR-IO V

19 .2. Using SR-IO V

19 .3. Tro ub les ho o ting SR-IO V

Chapt er 2 0 . Virt ual Net working

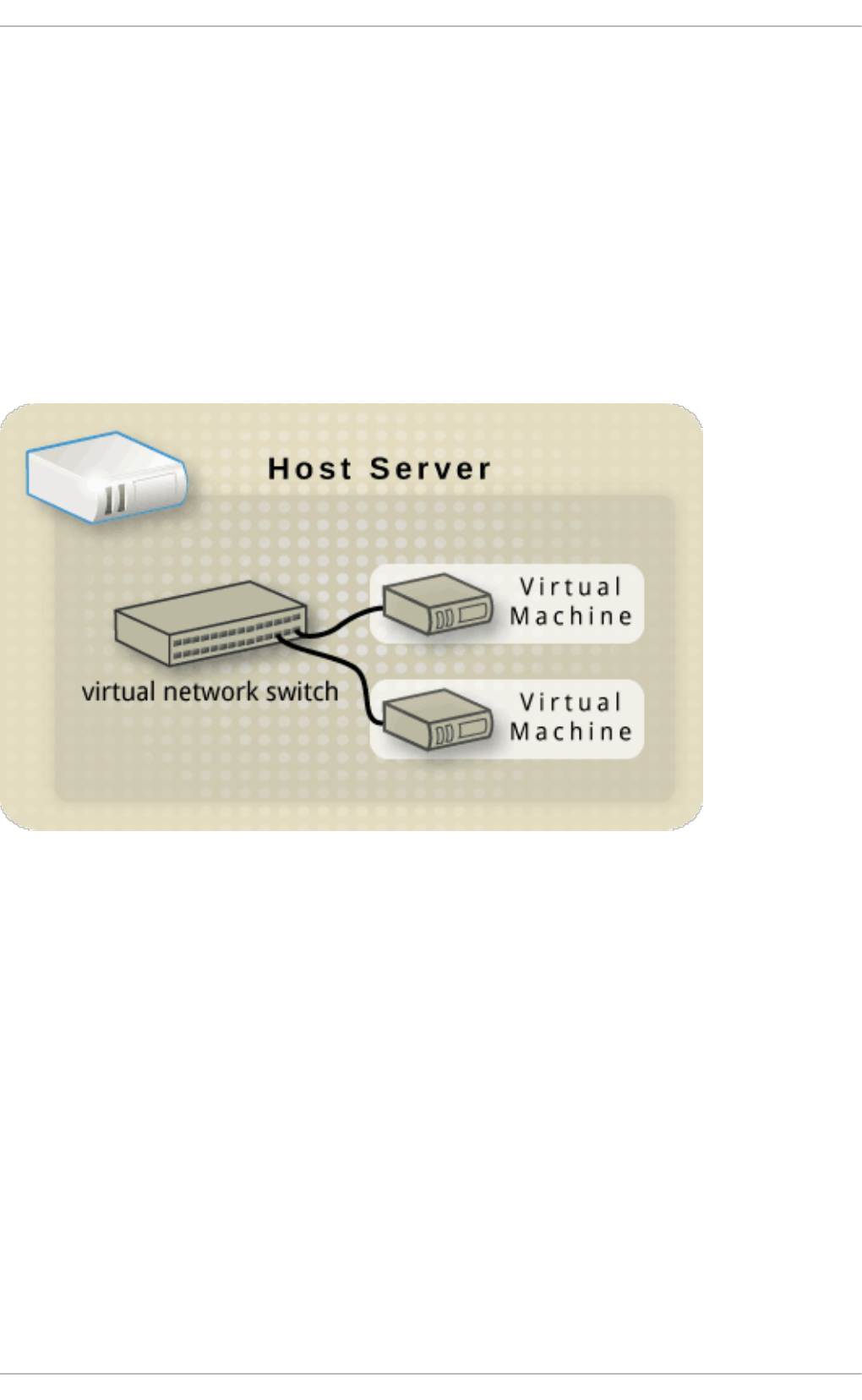

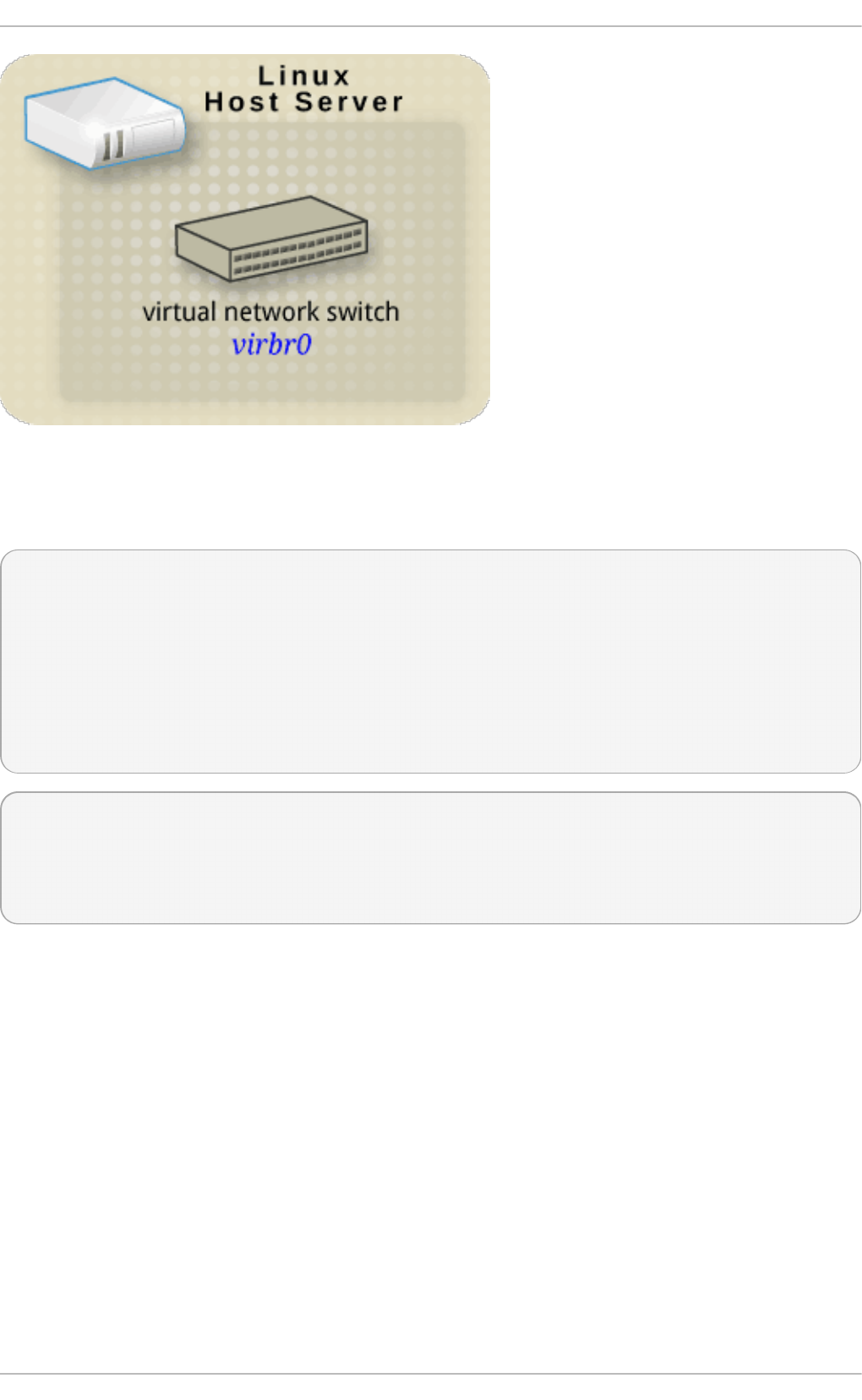

20 .1. Virtual netwo rk switches

20 .2. Brid g e Mo d e

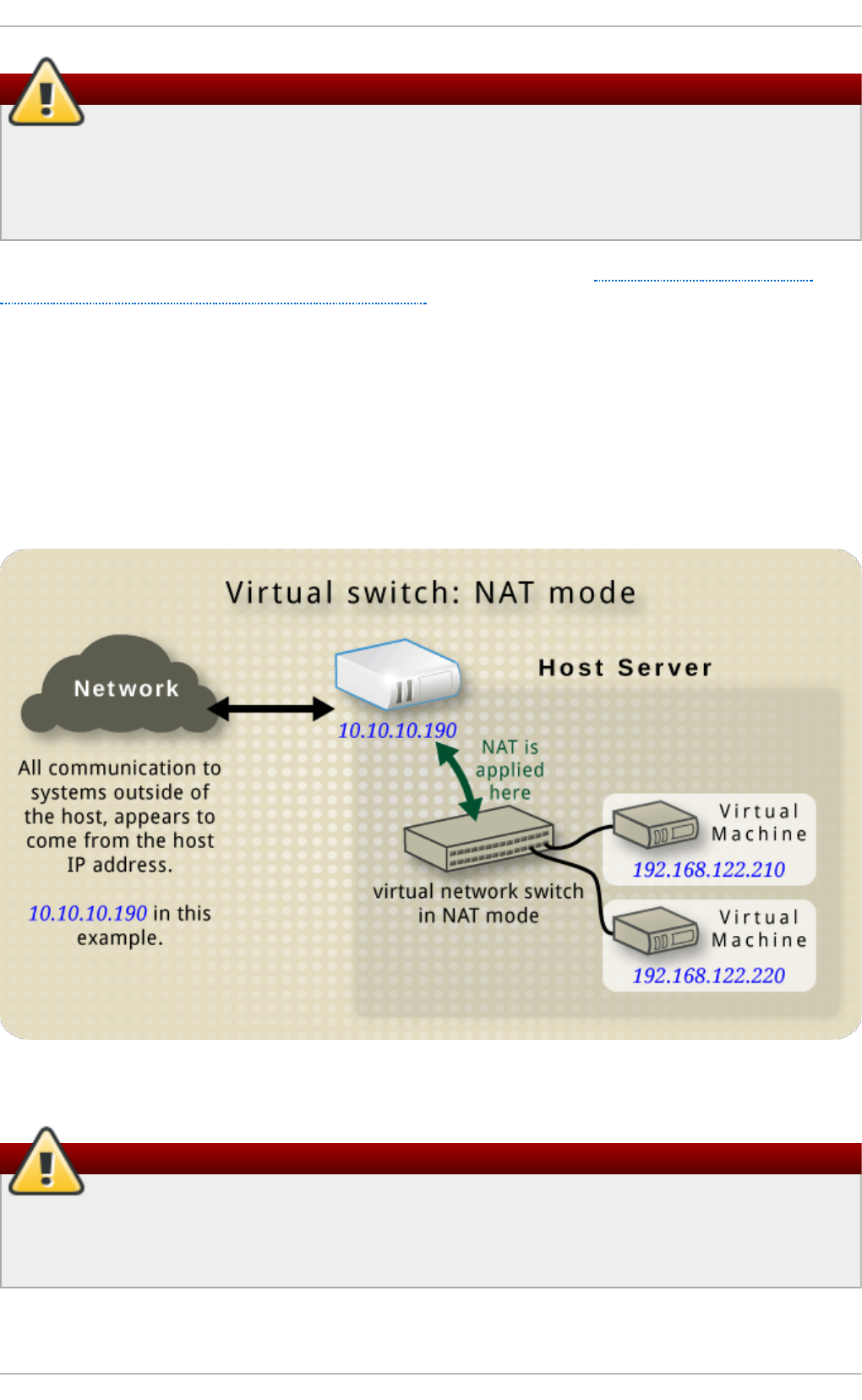

20 .3. Netwo rk Ad d ress Trans latio n

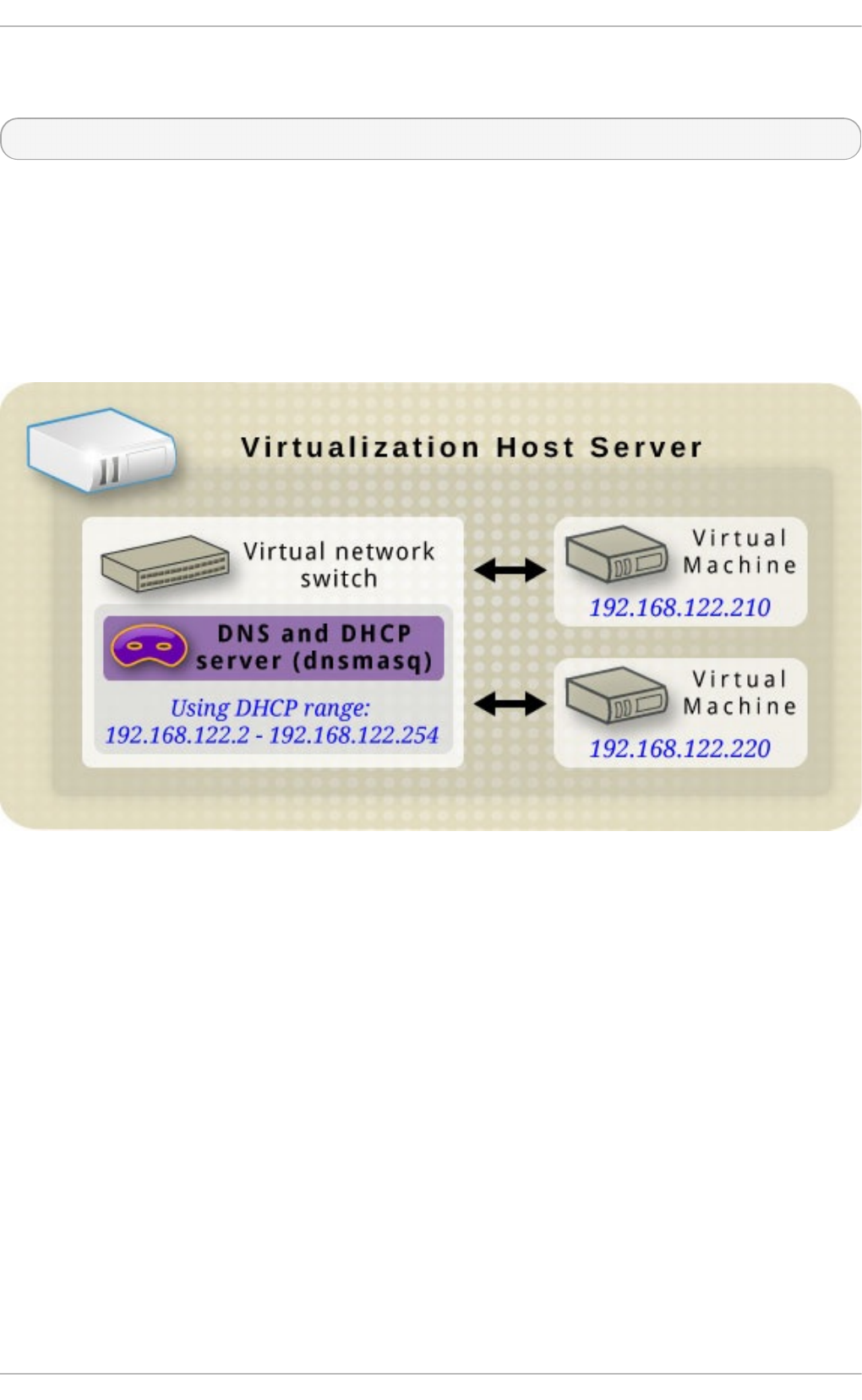

20 .4. DNS and DHCP

20 .5. Ro uted mo d e

20 .6 . Iso lated mo d e

20 .7. The d efault co nfig uratio n

20 .8 . Examp les o f co mmo n scenario s

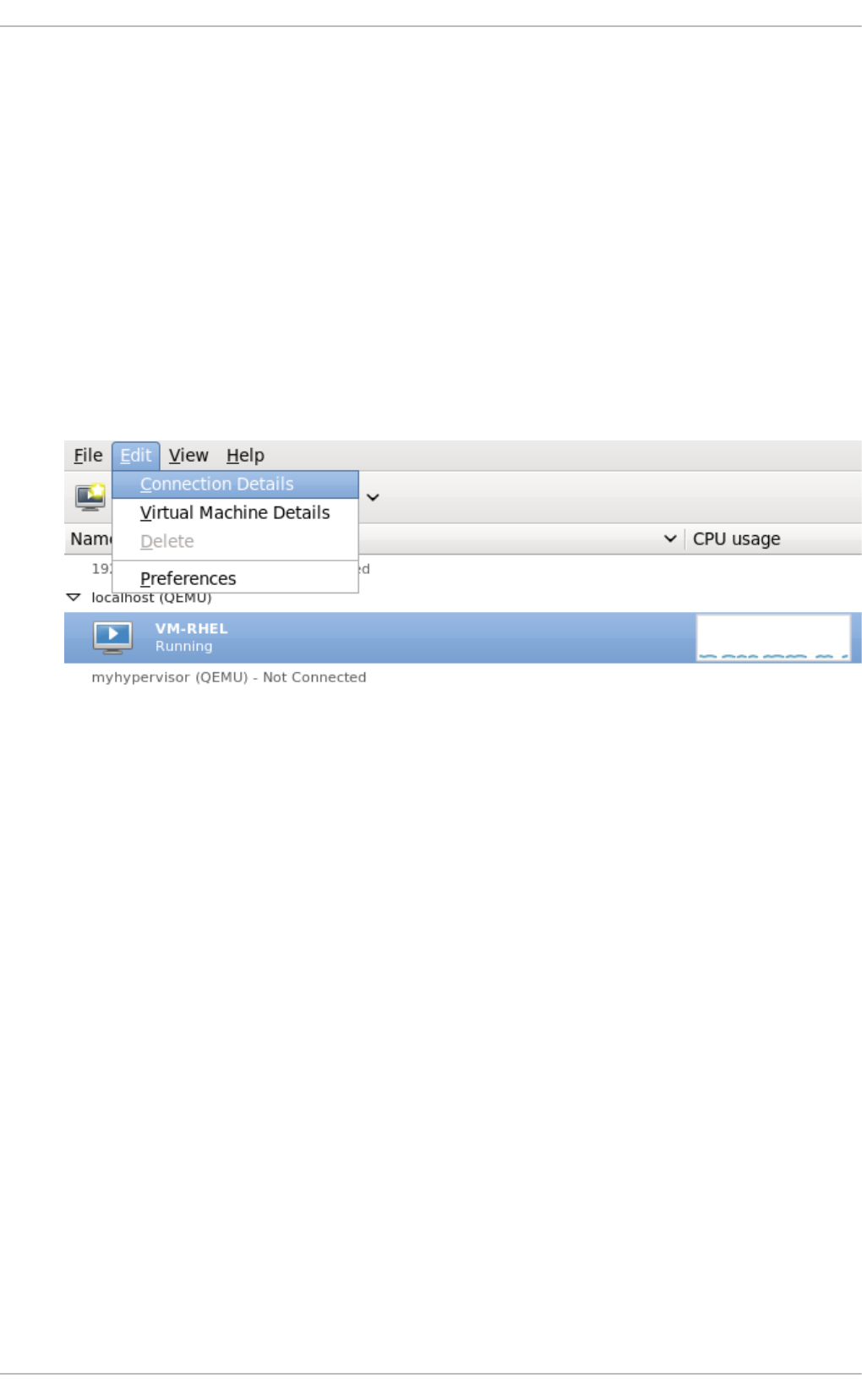

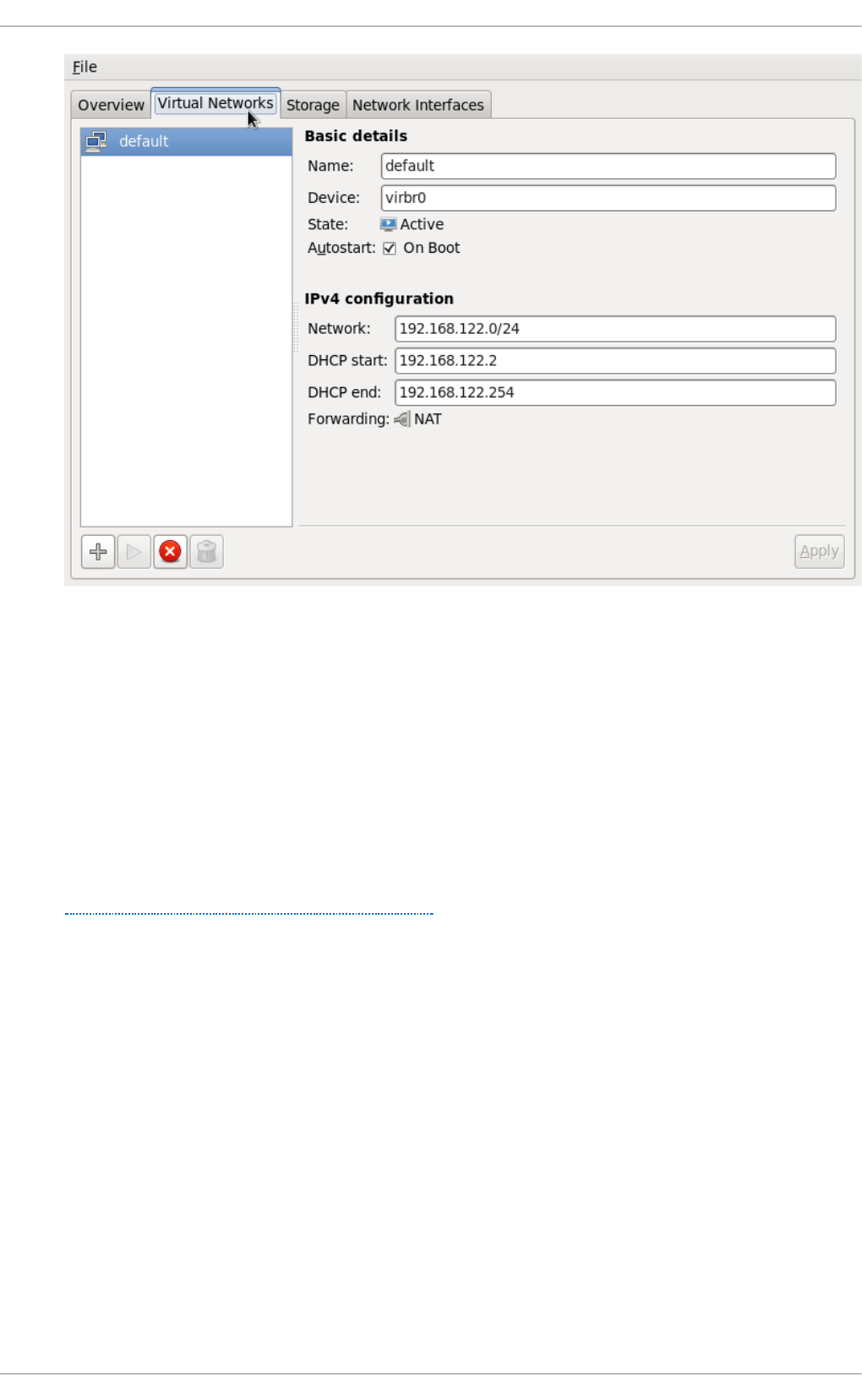

20 .9 . Manag ing a virtual netwo rk

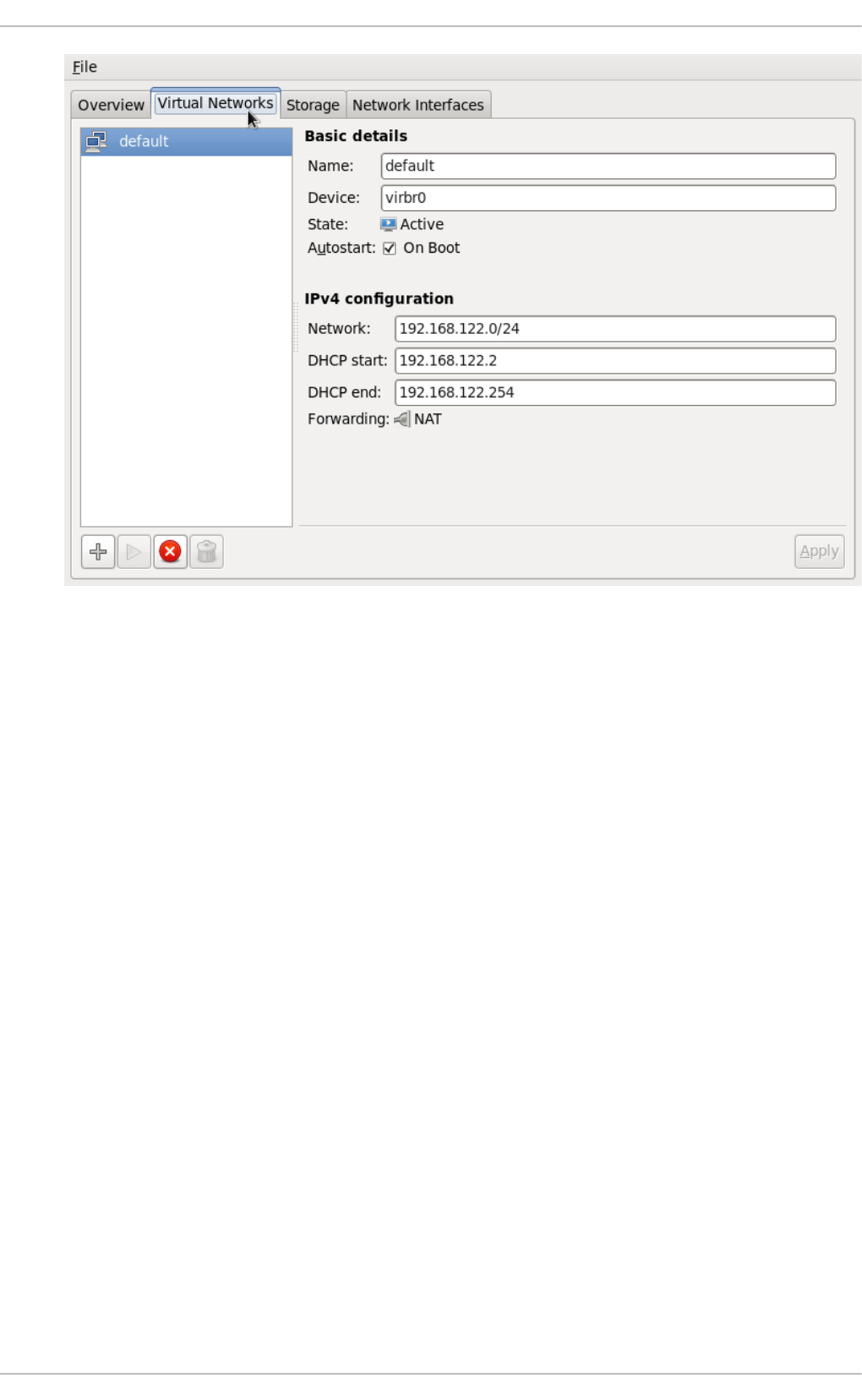

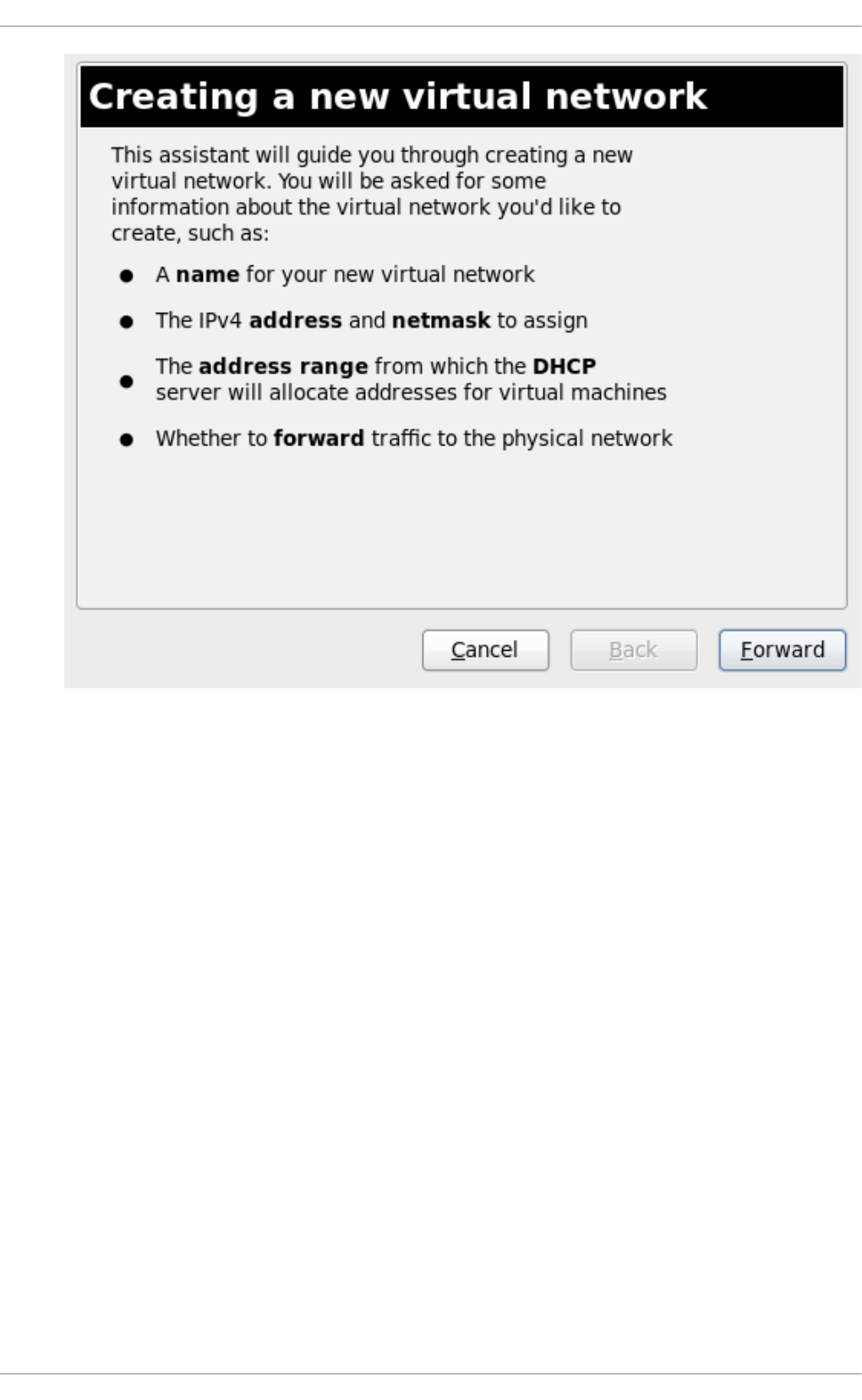

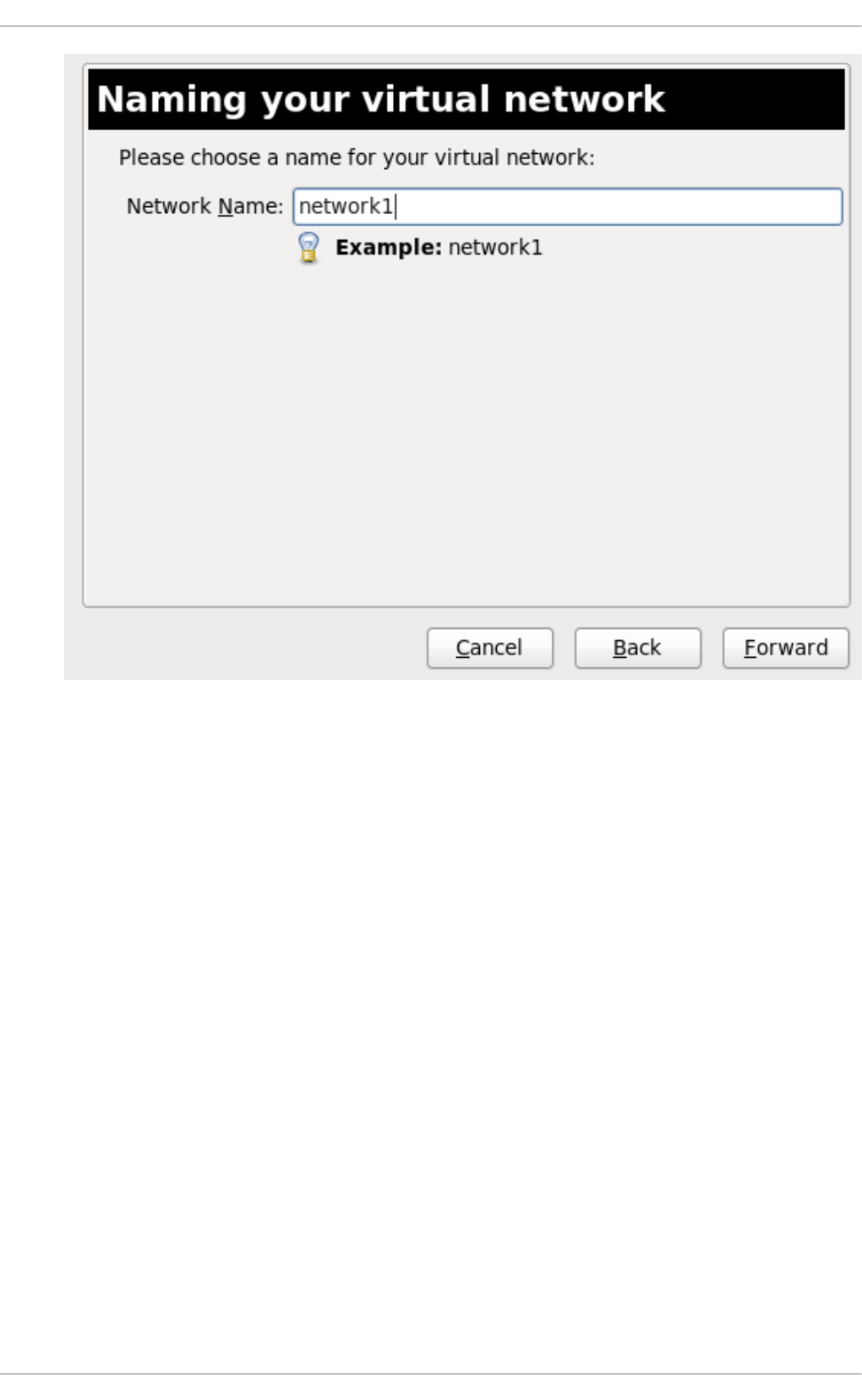

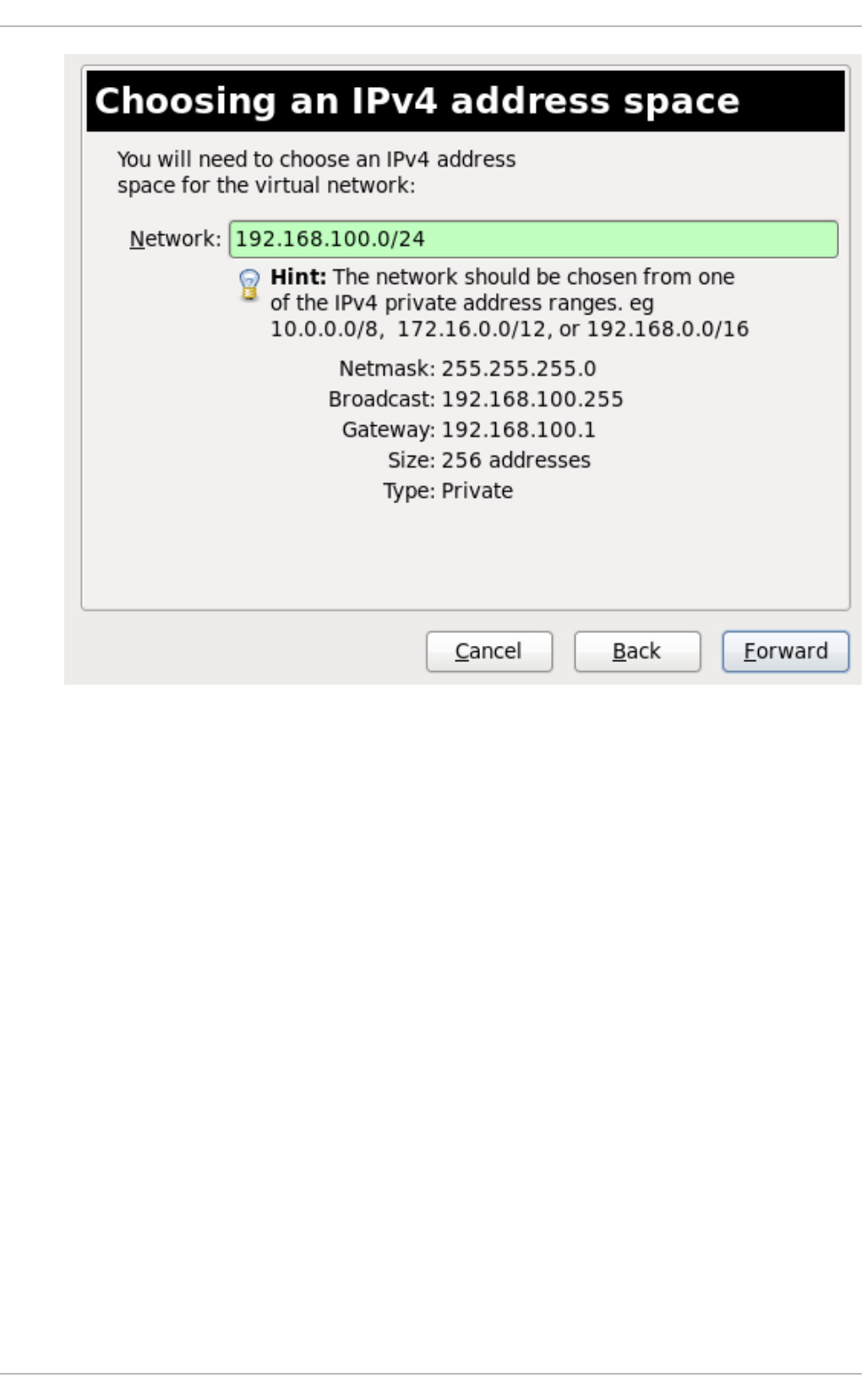

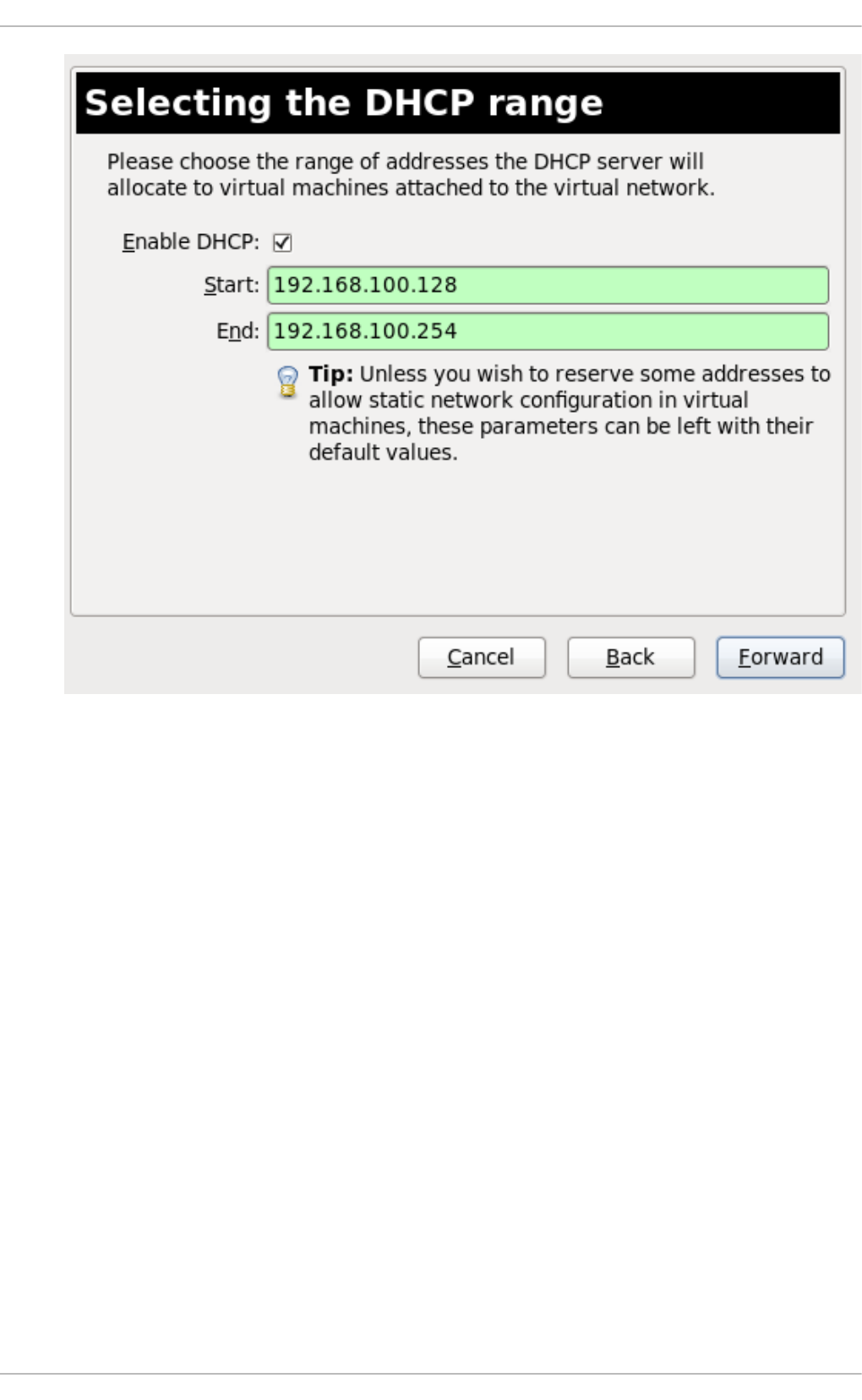

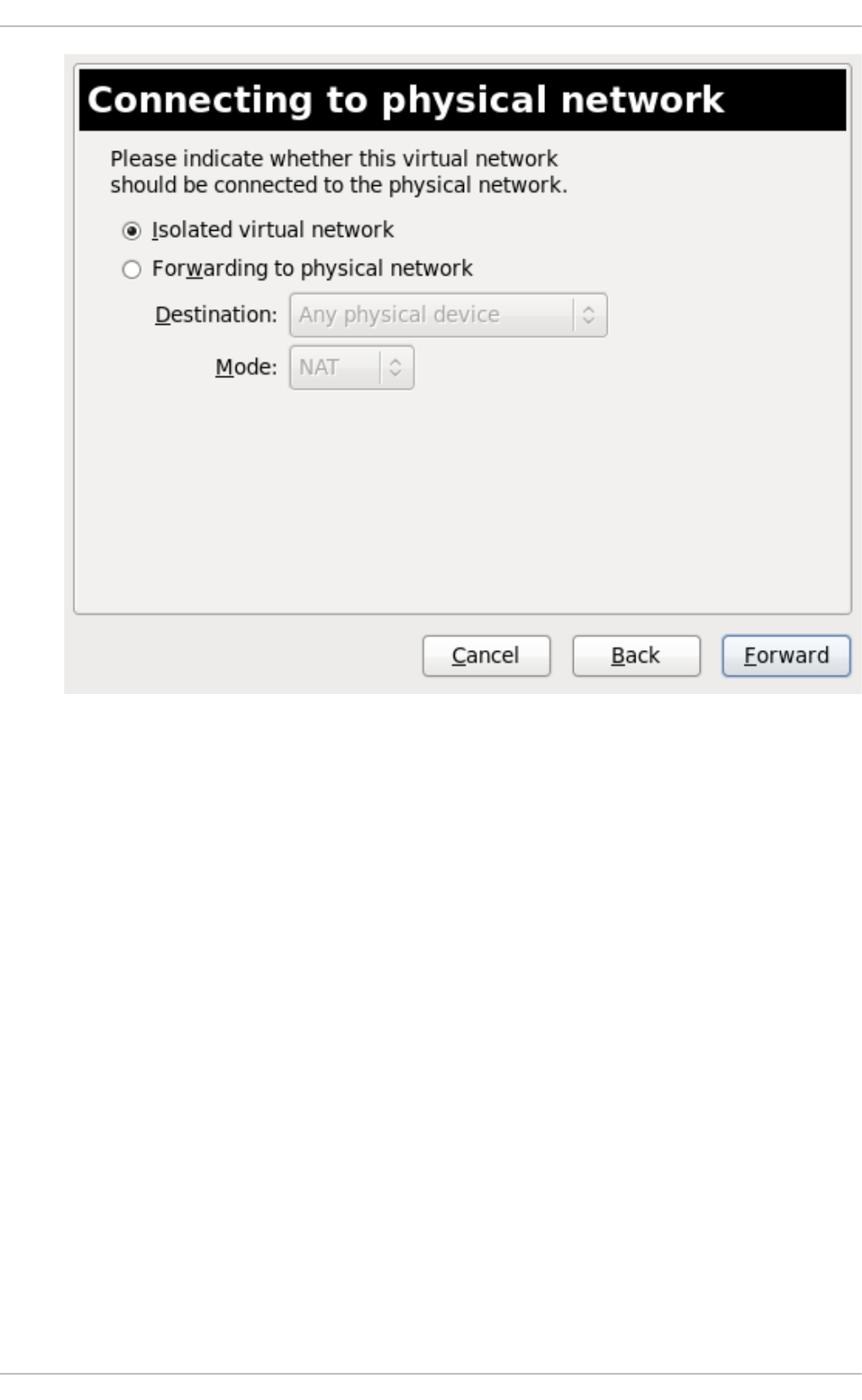

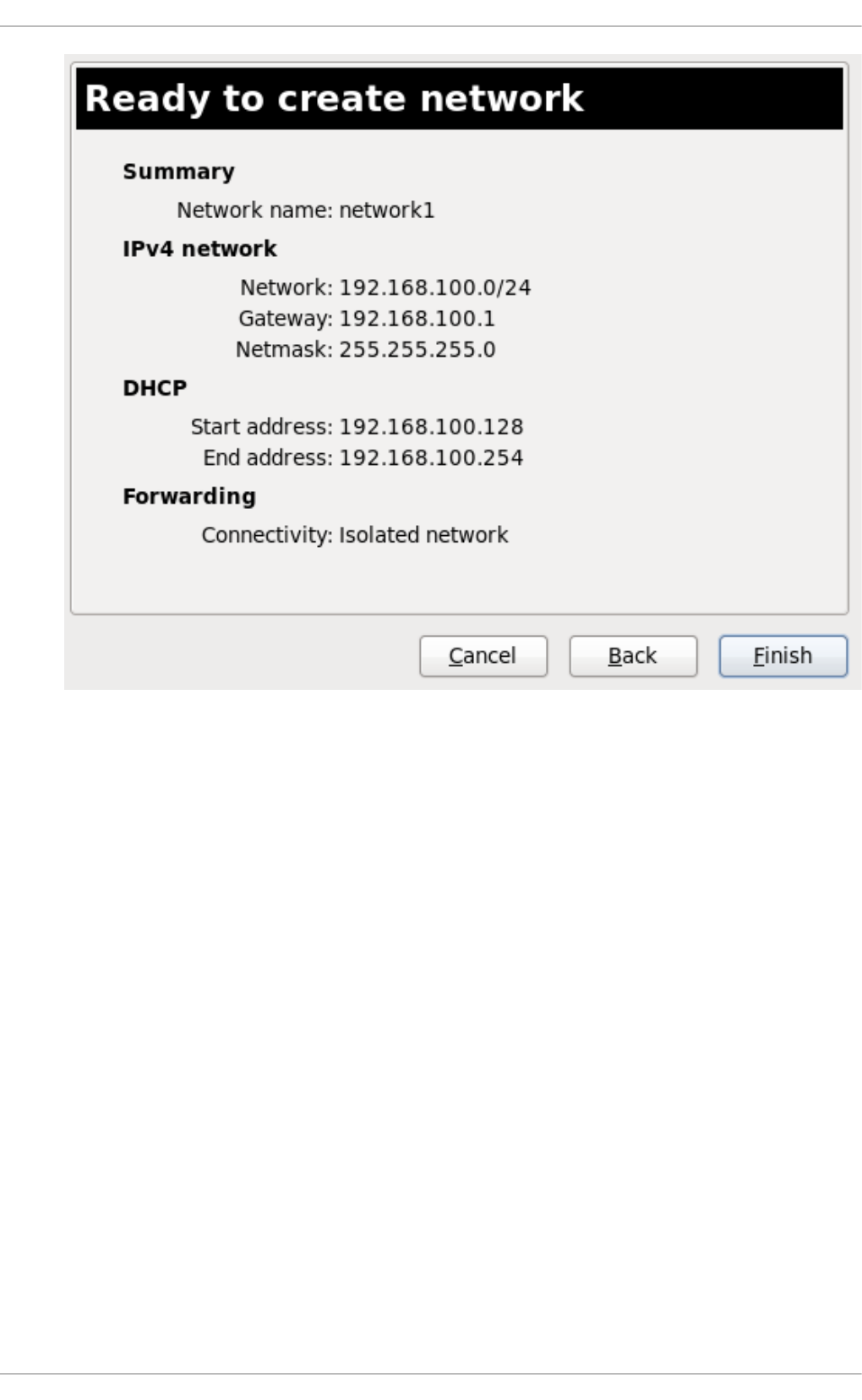

20 .10 . Creating a virtual netwo rk

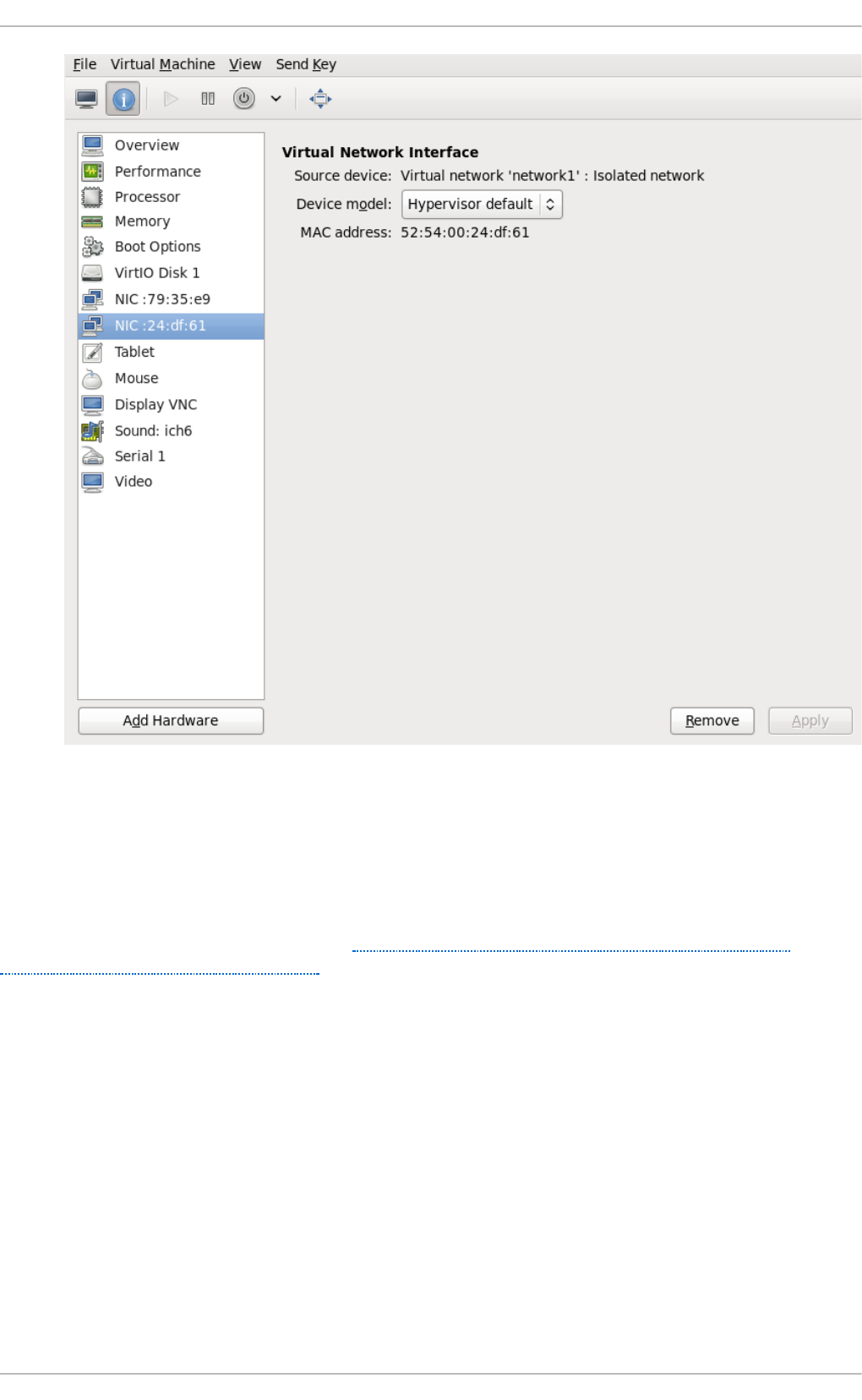

20 .11. Attaching a virtual netwo rk to a g uest

20 .12. Direc tly attaching to p hysical interface

20 .13. Dynamically chang ing a ho st p hysical machine o r a netwo rk b rid g e that is attached to a virtual

NIC

20 .14. Ap p lying netwo rk filtering

20 .15. Creating Tunnels

20 .16 . Setting vLAN tag s

20 .17. Ap p lying Q o S to yo ur virtual netwo rk

Chapt er 2 1 . Remot e management of g uest s

21.1. Remo te manag ement with SSH

21.2. Remo te manag ement o ver TLS and SSL

21.3. Transp o rt mo d es

21.4. Co nfig uring a VNC Server

Chapt er 2 2 . Managing g u est s wit h t he Virt ual Mach ine Manager (virt - manag er)

22.1. Starting virt-manag er

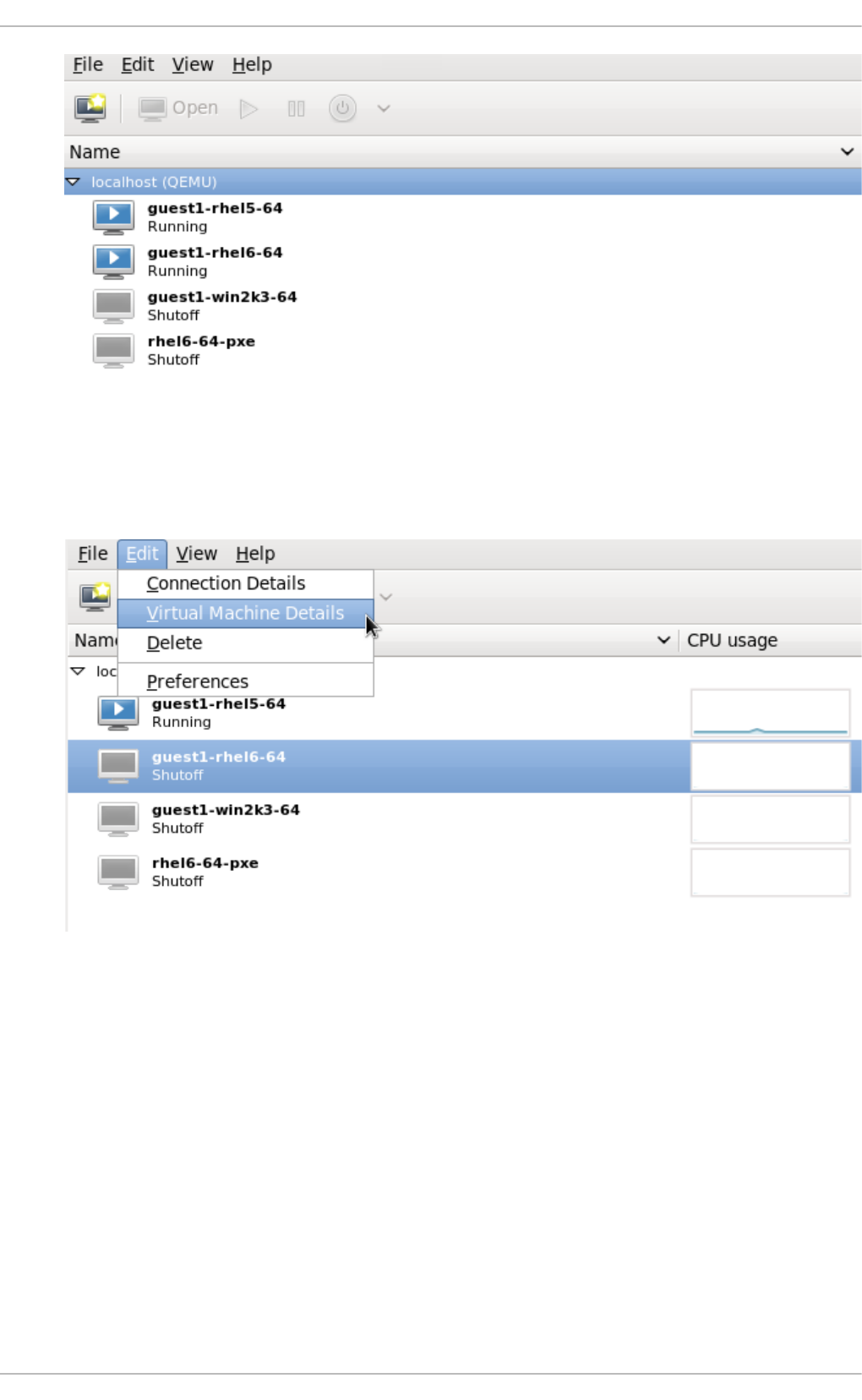

22.2. The Virtual Machine Manag er main wind o w

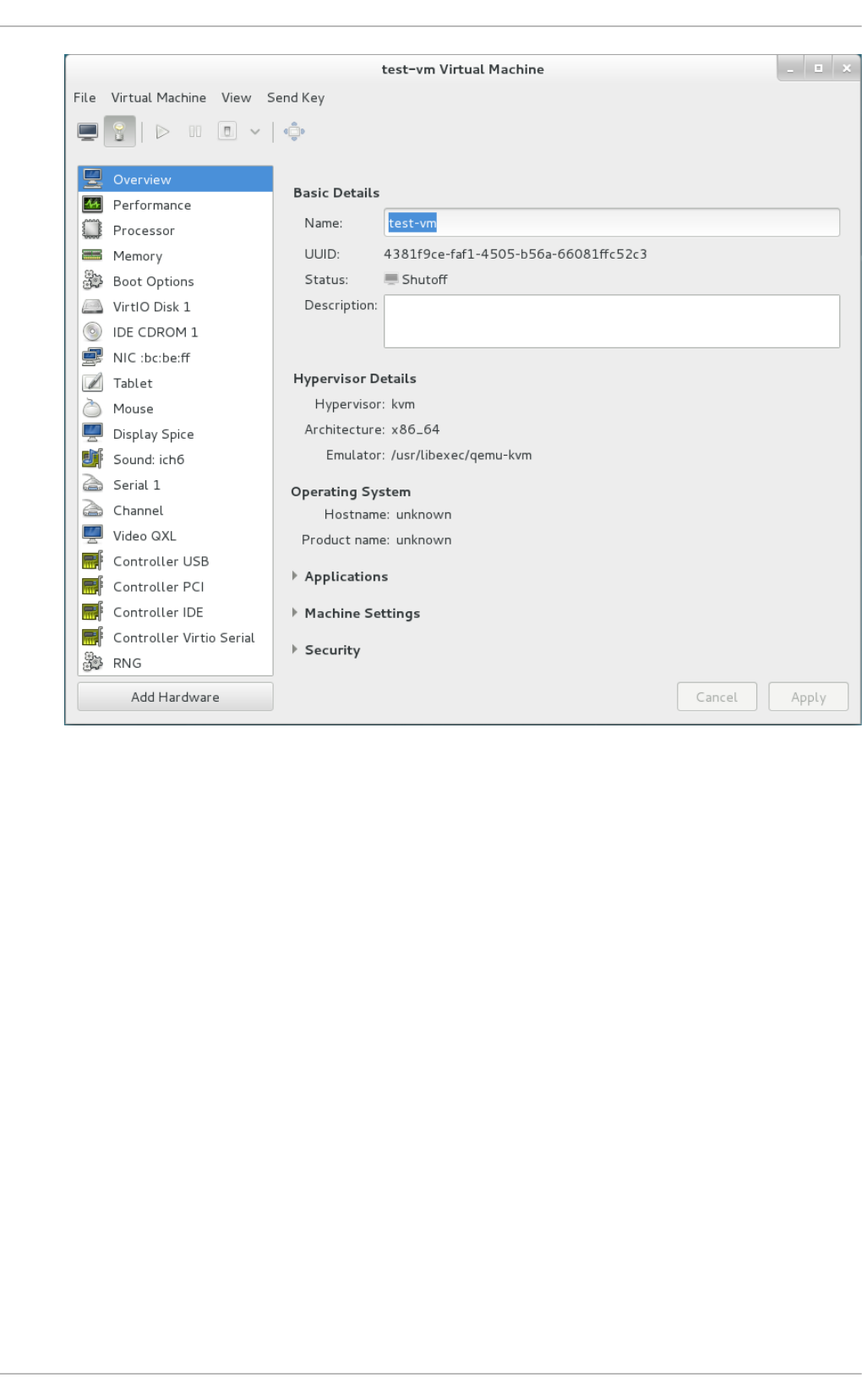

22.3. The virtual hard ware d etails wind o w

22.4. Virtual Mac hine g rap hic al c o ns o le

22.5. Ad d ing a remo te c o nnectio n

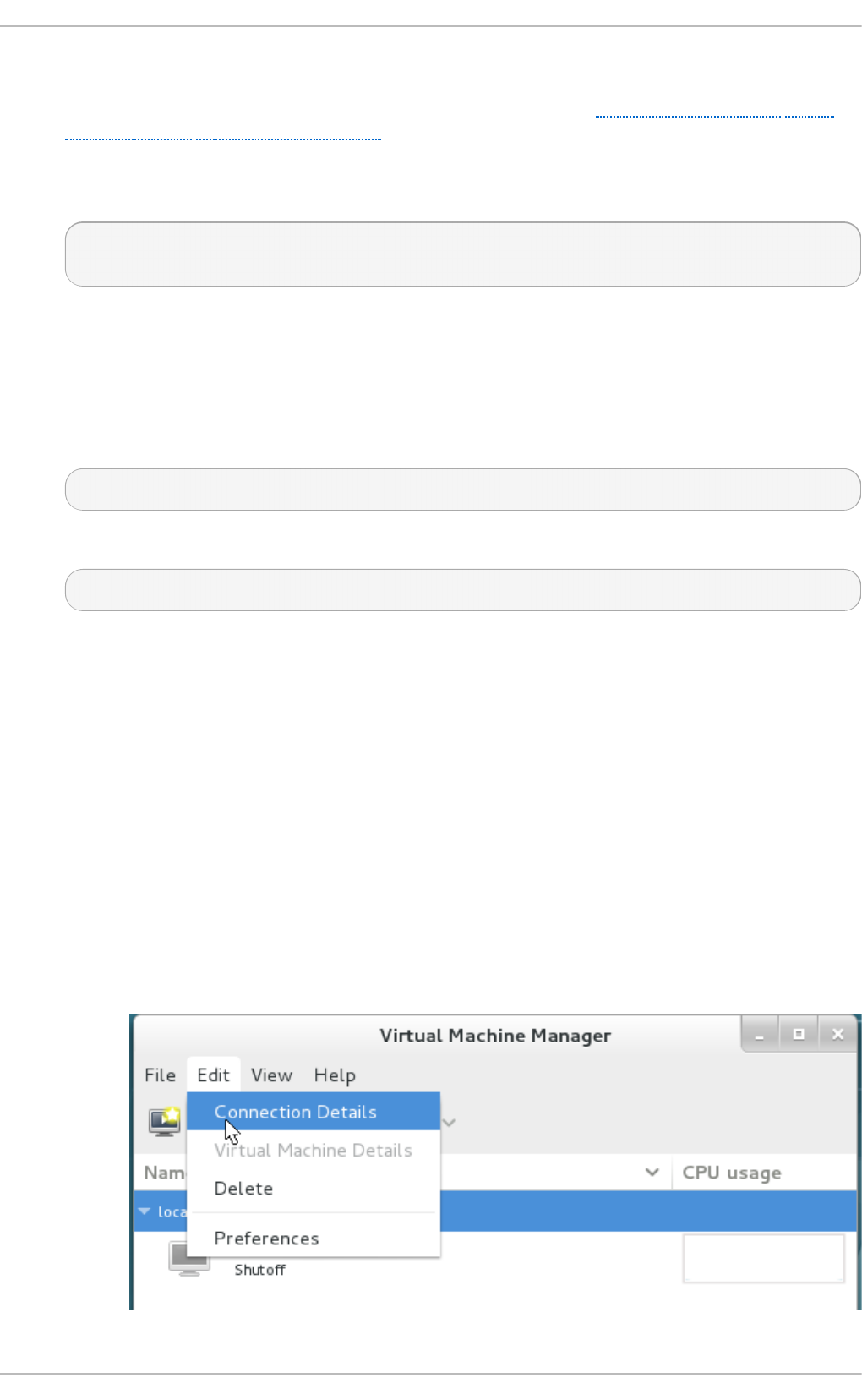

22.6 . Dis p laying g uest d etails

22.7. Perfo rmance mo nito ring

22.8 . Dis p laying CPU usag e fo r g uests

22.9 . Dis p laying CPU usag e fo r ho s ts

22.10 . Disp laying Dis k I/O

22.11. Disp laying Netwo rk I/O

Chapt er 2 3. Managing g u est virt u al machines wit h virsh

23.1. G uest virtual mac hine s tates

23.2. Running the virs h p ro g ram

23.3. Interactive mo d e co mmand s

23.4. Disp laying the virsh versio n

23.5. G etting help

23.6 . Send ing co mmand s with echo

23.7. Co nnecting to the hyp erviso r with virsh co nnect

23.8 . Dis p laying info rmatio n ab o ut g uest virtual machine

23.9 . G uest virtual mac hine b as ic co mmand s

23.10 . Shutting d o wn, reb o o ting , and fo rc ing a s hutd o wn o f a g uest virtual mac hine

221

222

222

228

229

229

230

231

232

232

233

234

235

238

239

246

250

252

253

28 0

28 1

28 2

283

28 3

28 6

28 8

29 2

293

29 3

29 4

29 5

30 2

30 3

30 5

312

314

316

318

321

32 5

325

325

326

326

327

328

328

328

329

333

T able of Cont ent s

3

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

23.10 . Shutting d o wn, reb o o ting , and fo rc ing a s hutd o wn o f a g uest virtual mac hine

23.11. Ed iting a g ues t virtual machine' s co nfig uratio n file

23.12. NUMA no d e manag ement

23.13. Retrieving g ues t virtual mac hine info rmatio n

23.14. Sto rag e p o o l c o mmand s

23.15. Sto rag e Vo lume Co mmand s

23.16 . Disp laying p er-g uest virtual mac hine info rmatio n

23.17. Manag ing virtual netwo rks

23.18 . Interfac e Co mmand s

23.19 . Manag ing snap sho ts

23.20 . G uest virtual mac hine CPU mo d el c o nfig uratio n

23.21. Co nfig uring the g uest virtual mac hine CPU mo d el

23.22. Manag ing reso urc es fo r g ues t virtual machines

23.23. Setting s ched ule p arameters

23.24. Disk I/O thro ttling

23.25. Disp lay o r set b lo ck I/O p arameters

23.26 . Co nfig uring memo ry Tuning

Chapt er 2 4 . G uest virt ual machine disk access wit h offline t ools

24.1. Intro d uc tio n

24.2. Termino lo g y

24.3. Installatio n

24.4. The g ues tfis h shell

24.5. O ther co mmand s

24.6 . virt-res cue: The resc ue shell

24.7. virt-d f: Mo nito ring d isk usag e

24.8 . virt-res ize: res izing g uest virtual mac hines o ffline

24.9 . virt-insp ecto r: ins p ec ting g uest virtual mac hines

24.10 . virt-win-reg : Read ing and ed iting the Wind o ws Reg istry

24.11. Using the API fro m Pro g ramming Lang uag es

24.12. virt-sysp rep : res etting virtual mac hine setting s

Chapt er 2 5. G raphic User Int erface t ools for guest virt ual machine manag ement

25.1. Using virt-viewer co mmand line

25.2. remo te-viewer

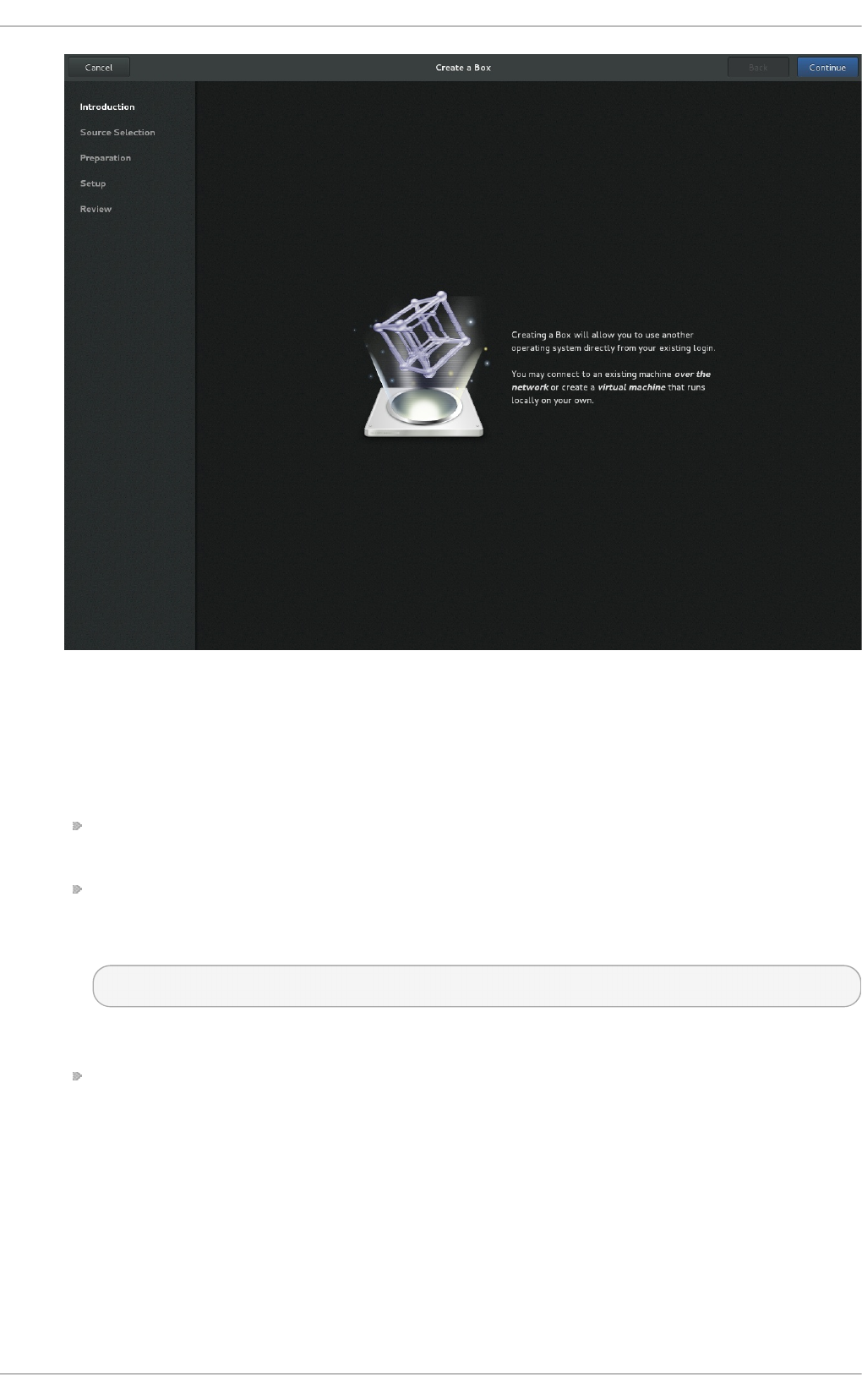

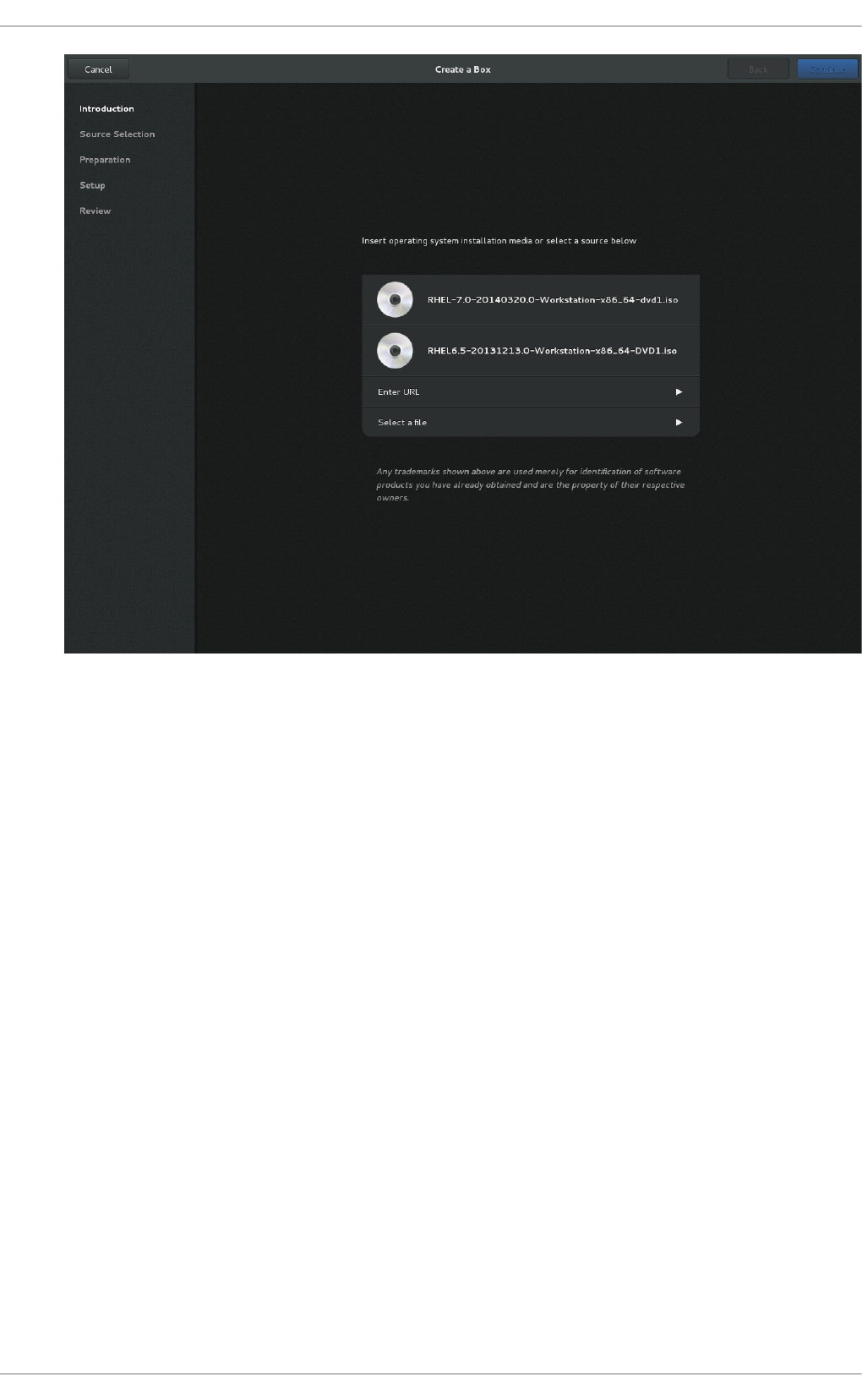

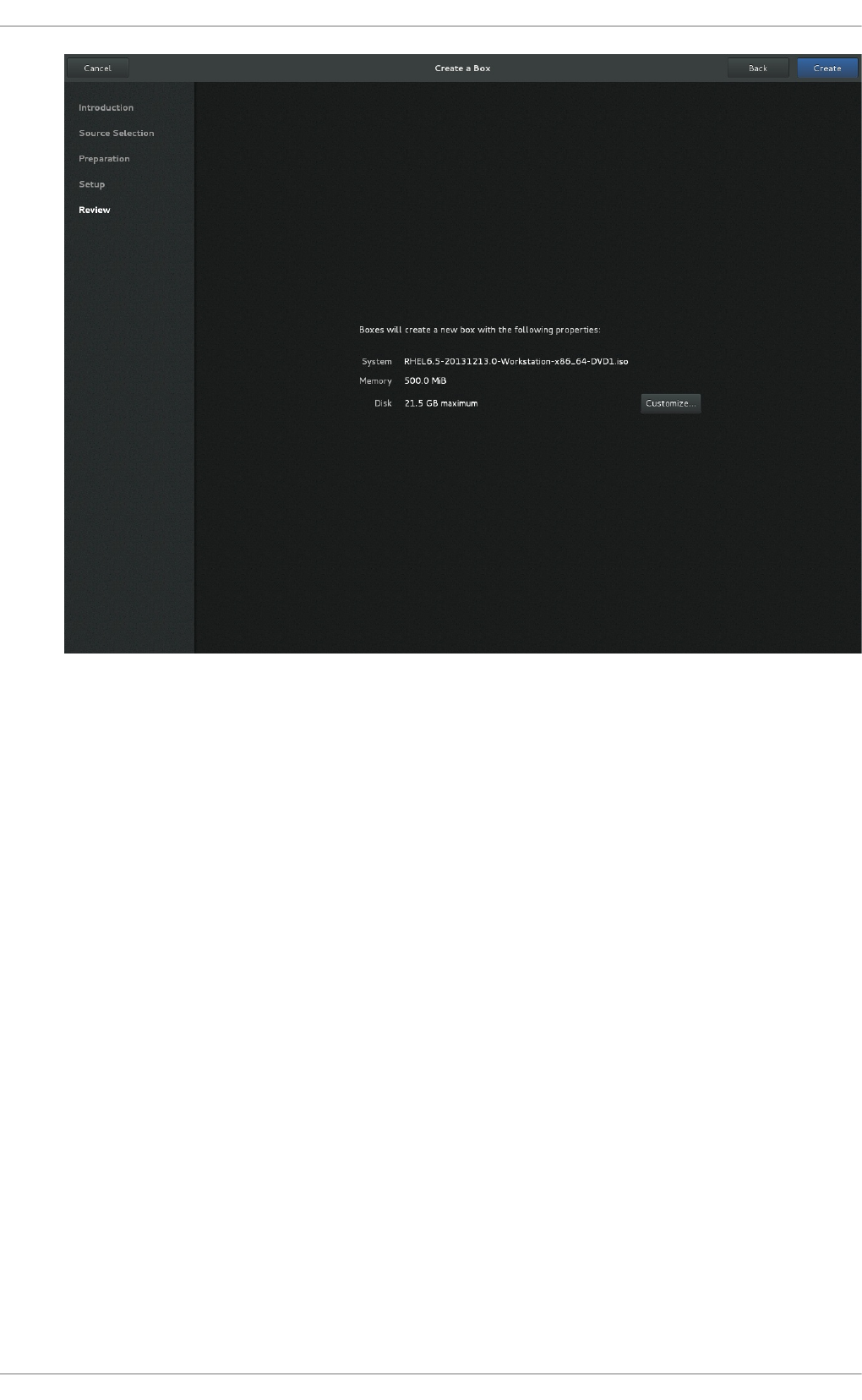

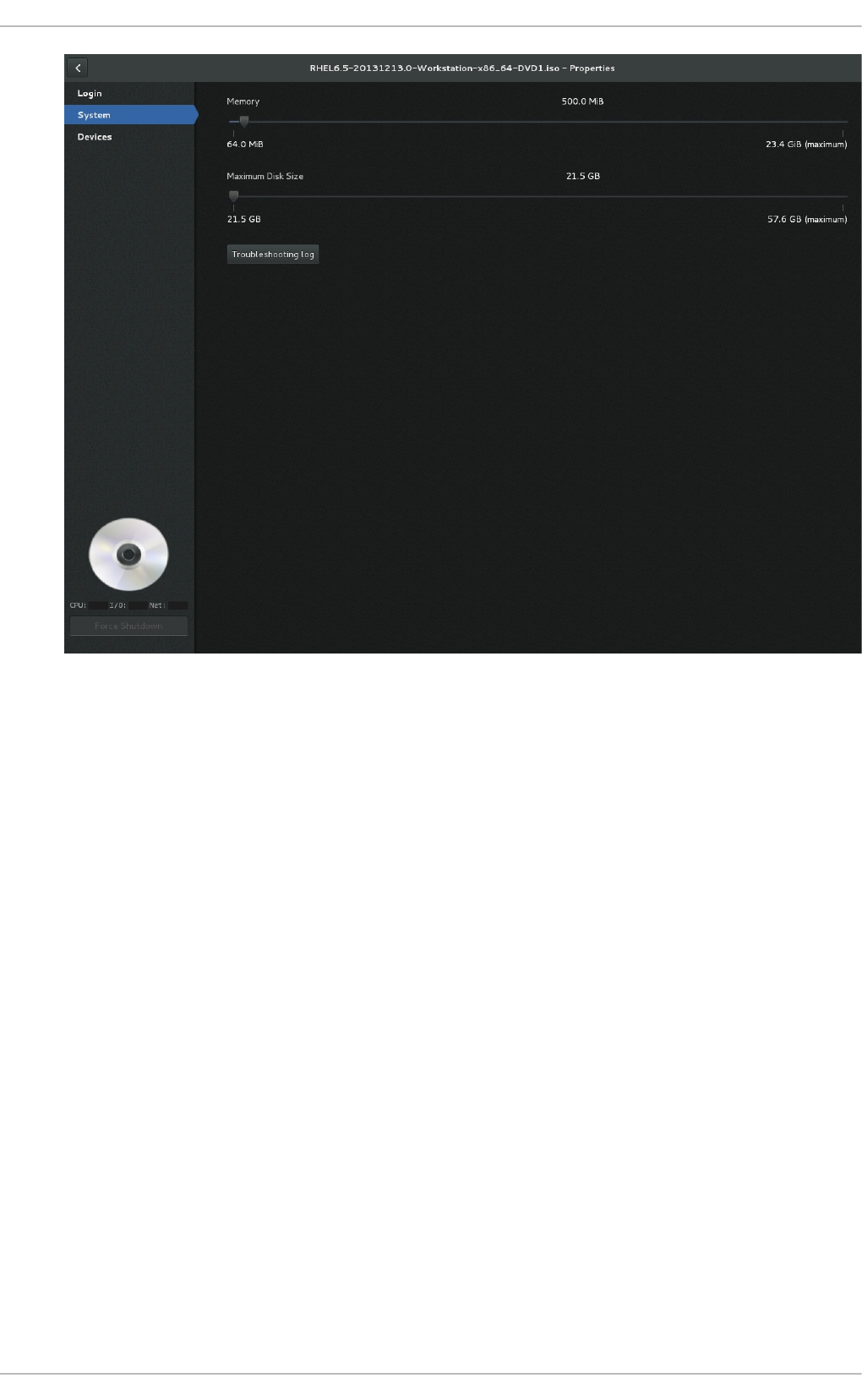

25.3. G NO ME Bo xes

Chapt er 2 6 . Manipulat ing t he domain XML

26 .1. G eneral info rmatio n and meta-d ata

26 .2. O p erating s ystem b o o ting

26 .3. SMBIO S s ystem info rmatio n

26 .4. CPU allo catio n

26 .5. CPU tuning

26 .6 . Memo ry b acking

26 .7. Memo ry tuning

26 .8 . Memo ry allo catio n

26 .9 . NUMA no d e tuning

26 .10 . Blo c k I/O tuning

26 .11. Reso urc e p artitio ning

26 .12. CPU mo d els and to p o lo g y

26 .13. Events co nfig uratio n

26 .14. Po wer Manag ement

26 .15. Hyp erviso r features

26 .16 . Time keep ing

26 .17. Timer element attrib utes

333

352

356

36 1

36 2

36 4

36 7

372

377

379

38 5

38 8

38 9

39 0

39 1

39 1

39 1

39 3

39 3

39 4

39 5

39 5

40 1

40 1

40 2

40 4

40 5

40 7

40 9

414

417

417

418

419

425

425

426

430

430

431

433

433

434

435

436

437

437

443

445

446

447

450

Virt ualizat ion Deployment and Administ rat ion G uide

4

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

26 .17. Timer element attrib utes

26 .18 . Devices

26 .19 . Sto rag e p o o ls

26 .20 . Sto rag e Vo lumes

26 .21. Security lab el

26 .22. A Samp le co nfig uratio n file

Part III. Appendices

Appendix A. T roublesh o o t ing

A.1. Deb ug g ing and tro ub les ho o ting to o ls

A.2. Prep aring fo r d isaster reco very

A.3. Creating virsh d ump files

A.4. Cap turing trac e d ata o n a co nstant b asis us ing the Sys temtap flig ht rec o rd er

A.5. kvm_s tat

A.6 . Tro ub les ho o ting with serial c o nso les

A.7. Virtualiz atio n lo g files

A.8 . Lo o p d evic e erro rs

A.9 . Live Mig ratio n Erro rs

A.10 . Enab ling Intel VT-x and AMD-V virtualizatio n hard ware extensio ns in BIO S

A.11. G enerating a new uniq ue MAC ad d res s

A.12. KVM netwo rking p erfo rmance

A.13. Wo rkaro und fo r creating external snap s ho ts with lib virt

A.14. Mis sing characters o n g uest co nso le with Jap anese keyb o ard

A.15. G ues t virtual machine fails to s hutd o wn

A.16 . Dis ab le SMART d isk mo nito ring fo r g ues t virtual machines

A.17. lib g ues tfs tro ub les ho o ting

A.18 . Co mmo n lib virt erro rs and tro ub les ho o ting

Appendix B. Virt ualizat io n rest rict ions

B.1. KVM restrictio ns

B.2. Ap p licatio n res trictio ns

B.3. O ther restrictio ns

B.4. Sto rag e sup p o rt

B.5. USB 3 / xHCI Sup p o rt

Appendix C. Addit ion al resources

C.1. O nline res o urces

C.2. Ins talled d o cumentatio n

Appendix D. Working wit h IO MMU G roups [1 ]

D.1. IO MMU O verview

D.2. A d eep -d ive into IO MMU g ro up s

D.3. Ho w to id entify and ass ig n IO MMU G ro up s

D.4. IO MMU strateg ies and case uses

Appendix E. Net KVM Driver Paramet ers

E.1. Co nfig urab le p arameters fo r NetKVM

Appendix F. Revision Hist ory

450

451

50 2

50 8

512

514

51 5

51 6

516

517

518

519

521

526

527

527

527

528

529

530

531

531

532

533

533

533

56 2

56 2

56 5

56 5

56 5

56 6

56 7

56 7

56 7

56 8

56 8

56 9

570

572

57 4

574

57 8

T able of Cont ent s

5

Part I. Deployment

Virt ualizat ion Deployment and Administ rat ion G uide

6

Chapter 1. System requirements

Virtualization is available with the KVM hypervisor for Red Hat Enterprise Linux 7 on the Intel 64 and

AMD64 architectures. This chapter lists system requirements for running virtual machines, also

referred to as VMs.

For information on installing the virtualization packages, see Chapter 2, Installing the virtualization

packages.

1.1. Host syst em requirement s

Min imu m h o st syst em req u iremen t s

6 GB free disk space.

2 GB RAM.

Reco mmen d ed syst em req uiremen t s

One core or thread for each virtualized CPU and one for the host.

2 GB of RAM, plus additional RAM for virtual machines.

6 GB disk space for the host, plus the required disk space for the virtual machine(s).

Most guest operating systems require at least 6 GB of disk space. Additional storage space for

each guest depends on their workload.

Swap sp ace

Swap space in Linux is used when the amount of physical memory (RAM) is full. If the system

needs more memory resources and the RAM is full, inactive pages in memory are moved to the

swap space. While swap space can help machines with a small amount of RAM, it should not be

considered a replacement for more RAM. Swap space is located on hard drives, which have a

slower access time than physical memory. The size of your swap partition can be calculated from

the physical RAM of the host. The Red Hat Customer Portal contains an article on safely and

efficiently determining the size of the swap partition:

https://access.redhat.com/site/solutions/15244.

When using raw image files, the total disk space required is equal to or greater than the sum of

the space required by the image files, the 6 GB of space required by the host operating system,

and the swap space for the guest.

Eq u at io n 1.1. C alcu lat in g req uired sp ace f o r gu est virt u al mach in es u sin g raw

imag es

total for raw format = images + hostspace + swap

For qcow images, you must also calculate the expected maximum storage requirements of the

guest (total for qcow format), as qcow and qcow2 images are able to grow as

required. To allow for this expansion, first multiply the expected maximum storage

Chapt er 1 . Syst em requirement s

7

requirements of the guest (expected maximum guest storage) by 1.01, and add to this

the space required by the host (host), and the necessary swap space (swap).

Eq u at io n 1.2. C alcu lat in g req uired sp ace f o r gu est virt u al mach in es u sin g

q co w imag es

total for qcow format = (expected maximum guest storage * 1.01) + host + swap

Guest virtual machine requirements are further outlined in Chapter 8, Overcommitting with KVM.

1.2. KVM hypervisor requirement s

The KVM hypervisor requires:

an Intel processor with the Intel VT-x and Intel 64 virtualization extensions for x86-based systems;

or

an AMD processor with the AMD-V and the AMD64 virtualization extensions.

Virtualization extensions (Intel VT-x or AMD-V) are required for full virtualization. Run the following

commands to determine whether your system has the hardware virtualization extensions, and that

they are enabled.

Pro ced ure 1.1. Verif yin g virt ualiz at io n ext en sio ns

1. Verif y th e CPU virt u aliz at io n ext en sio n s are availab le

Run the following command to verify the CPU virtualization extensions are available:

$ grep -E 'svm|vmx' /proc/cpuinfo

2. An alyz e t he o u t put

The following example output contains a vmx entry, indicating an Intel processor with the

Intel VT-x extension:

flags : fpu tsc msr pae mce cx8 vmx apic mtrr mca cmov pat

pse36 clflush

dts acpi mmx fxsr sse sse2 ss ht tm syscall lm constant_tsc pni

monitor ds_cpl

vmx est tm2 cx16 xtpr lahf_lm

The following example output contains an svm entry, indicating an AMD processor with

the AMD-V extensions:

flags : fpu tsc msr pae mce cx8 apic mtrr mca cmov pat pse36

clflush

mmx fxsr sse sse2 ht syscall nx mmxext svm fxsr_opt lm 3dnowext

3dnow pni cx16

lahf_lm cmp_legacy svm cr8legacy ts fid vid ttp tm stc

If the grep -E 'svm|vmx' /proc/cpuinfo command returns any output, the processor

contains the hardware virtualization extensions. In some circumstances, manufacturers

Virt ualizat ion Deployment and Administ rat ion G uide

8

disable the virtualization extensions in the BIOS. If the extensions do not appear, or full

virtualization does not work, see Procedure A.4, “ Enabling virtualization extensions in BIOS”

for instructions on enabling the extensions in your BIOS configuration utility.

3. En su re t h e K VM kern el mo d u les are lo ad ed

As an additional check, verify that the kvm modules are loaded in the kernel with the following

command:

# lsmod | grep kvm

If the output includes kvm_intel or kvm_amd, the kvm hardware virtualization modules are

loaded.

Note

The virsh utility (provided by the libvirt-client package) can output a full list of your system's

virtualization capabilities with the following command:

# virsh capabilities

1.3. KVM guest virt ual machine compat ibilit y

Red Hat Enterprise Linux 7 servers have certain support limits.

The following URLs explain the processor and memory amount limitations for Red Hat Enterprise

Linux:

For host systems: https://access.redhat.com/articles/rhel-limits

For the KVM hypervisor: https://access.redhat.com/articles/rhel-kvm-limits

The following URL lists guest operating systems certified to run on a Red Hat Enterprise Linux KVM

host:

https://access.redhat.com/articles/973133

Note

For additional information on the KVM hypervisor's restrictions and support limits, see

Appendix B, Virtualization restrictions.

1.4. Support ed guest CPU models

Every hypervisor has its own policy for which CPU features the guest will see by default. The set of

CPU features presented to the guest by the hypervisor depends on the CPU model chosen in the

guest virtual machine configuration.

Chapt er 1 . Syst em requirement s

9

1.4 .1. List ing t he guest CPU models

To view a full list of the CPU models supported for an architecture type, run the virsh cpu-models

<arch> command. For example:

$ virsh cpu-models x86_64

486

pentium

pentium2

pentium3

pentiumpro

coreduo

n270

core2duo

qemu32

kvm32

cpu64-rhel5

cpu64-rhel6

kvm64

qemu64

Conroe

Penryn

Nehalem

Westmere

SandyBridge

Haswell

athlon

phenom

Opteron_G1

Opteron_G2

Opteron_G3

Opteron_G4

Opteron_G5

$ virsh cpu-models ppc64

POWER7

POWER7_v2.1

POWER7_v2.3

POWER7+_v2.1

POWER8_v1.0

The full list of supported CPU models and features is contained in the cpu_map.xml file, located in

/usr/share/libvirt/:

# cat /usr/share/libvirt/cpu_map.xml

A guest's CPU model and features can be changed in the <cpu> section of the domain XML file. See

Section 26.12, “CPU models and topology” for more information.

The host model can be configured to use a specified feature set as needed. For more information, see

Section 26.12.1, “Changing the feature set for a specified CPU” .

Virt ualizat ion Deployment and Administ rat ion G uide

10

Chapter 2. Installing the virtualization packages

To use virtualization, the virtualization packages must be installed on your computer. Virtualization

packages can be installed either during the host installation sequence or after host installation using

the yum command and Subscription Manager.

The KVM hypervisor uses the default Red Hat Enterprise Linux kernel with the kvm kernel module.

2.1. Configuring a virt ualizat ion host during a new Red Hat Ent erprise

Linux 7 inst allat ion

This section covers installing virtualization tools and virtualization packages as part of a fresh Red

Hat Enterprise Linux installation.

Note

The Red Hat Enterprise Linux 7 Installation Guide covers installing Red Hat Enterprise Linux in

detail.

Pro ced ure 2.1. In st alling th e virt u aliz at io n p ackag e g ro u p

1. Lau n ch t he Red Hat En t erp rise Lin u x 7 in st allat io n pro g ram

Start an interactive Red Hat Enterprise Linux 7 installation from the Red Hat Enterprise Linux

Installation CD-ROM, DVD or PXE.

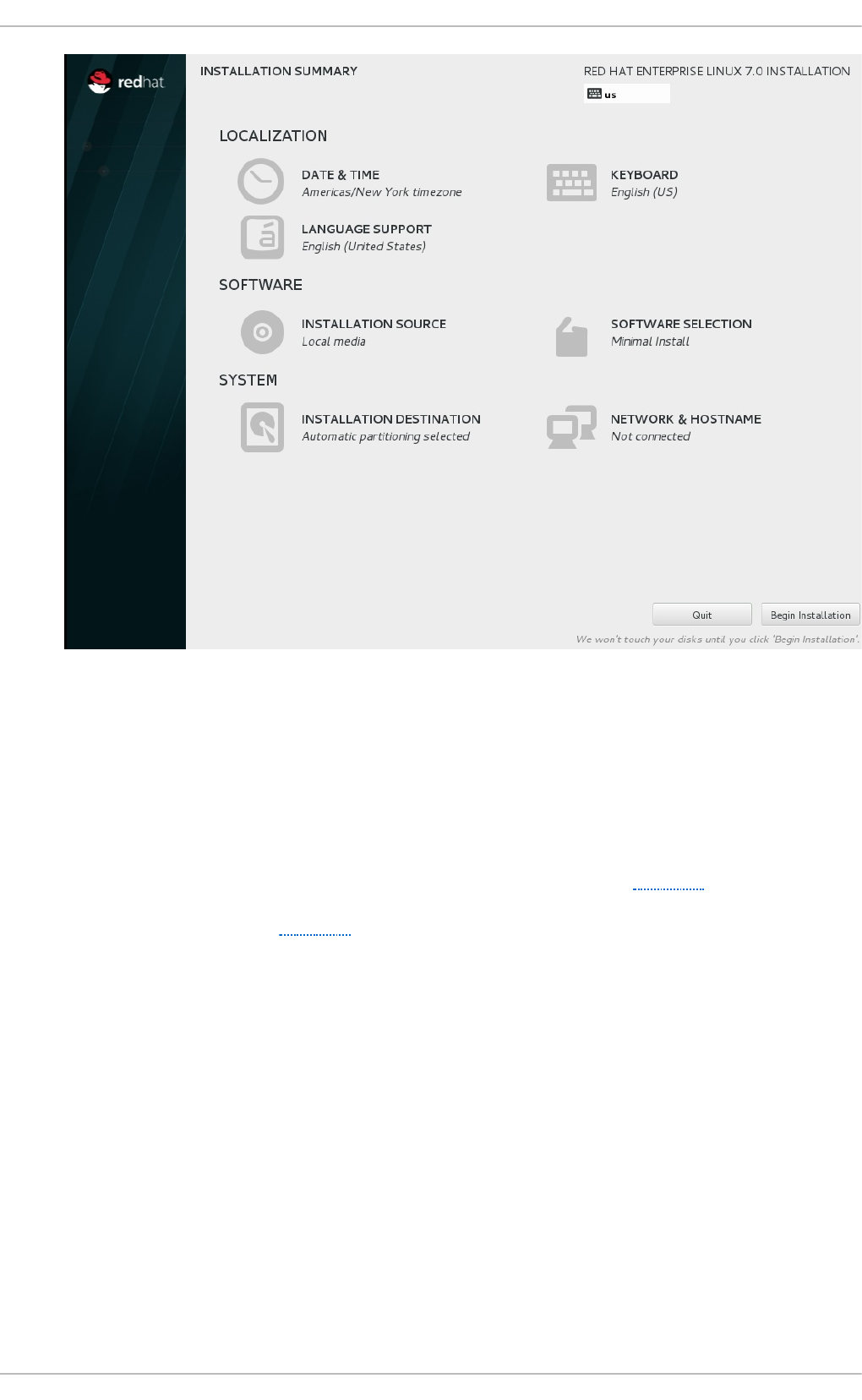

2. Co n t in ue in st allat io n up to so f tware select io n

Complete the other steps up to the software selection step. The Installation Summary

screen prompts the user to complete any steps still requiring attention.

Chapt er 2 . Inst alling t he virt ualiz at ion packages

11

Fig u re 2.1. T h e In st allat io n Su mmary screen

Software Selection defaults to Minimal Install. Open the Software Selection screen

to select the virtualization packages instead.

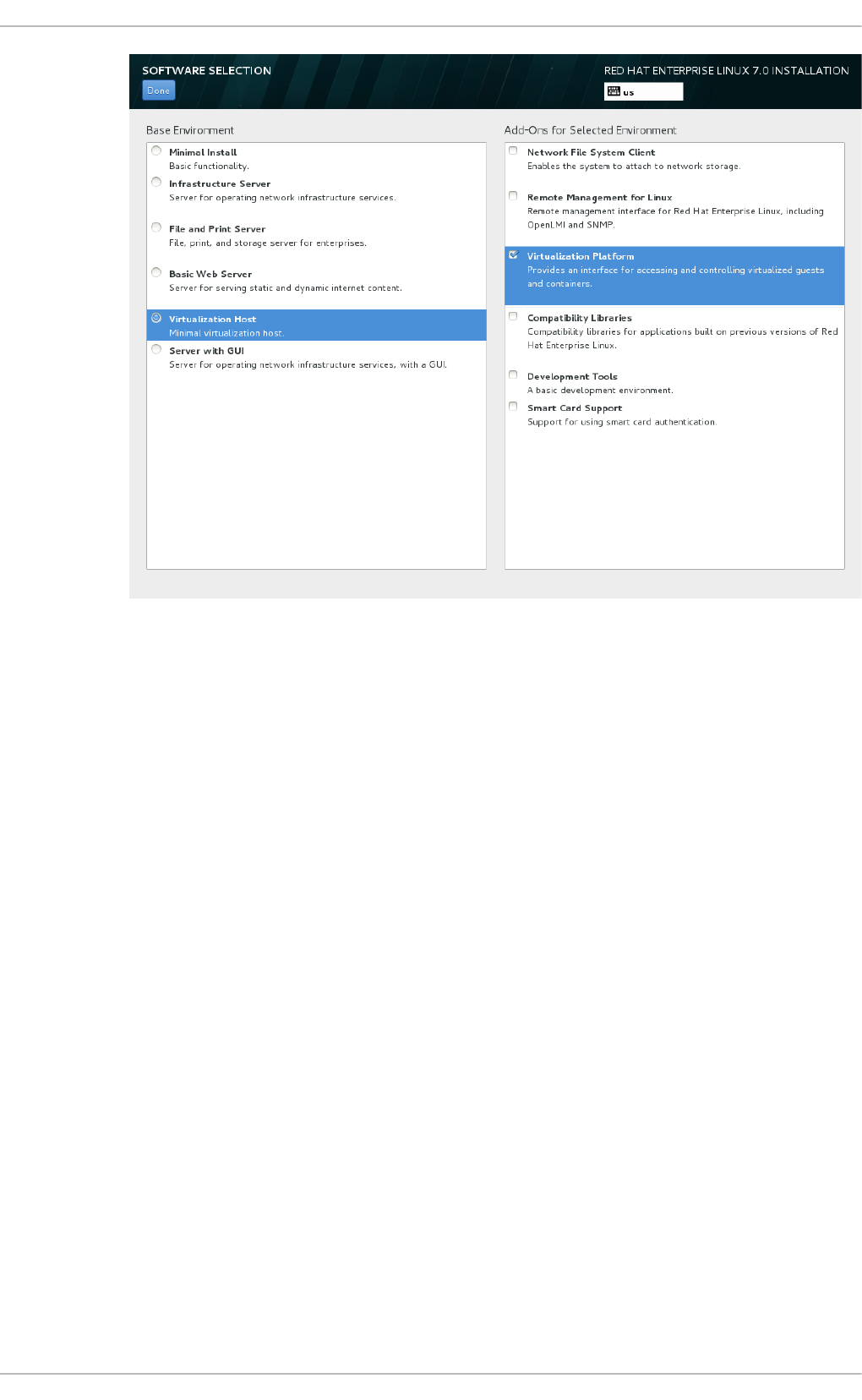

3. Select t h e server t ype an d packag e g ro u p s

Red Hat Enterprise Linux 7 has two available options for installing a virtualization host: a

minimal virtualization host with only the basic packages installed (Step 3.a), or a

virtualization host with packages installed to allow management of guests through a

graphical user interface (Step 3.b).

a.

Select in g a min imal virt u aliz at io n h o st

Select the Vi rtual i zati o n Ho st radio button under Base Environment, and

the Vi rtual i zati o n P l atfo rm checkbox under Add-Ons for Selected

Environment. This installs a basic virtualization environment which can be run with

virsh, or remotely over the network.

Virt ualizat ion Deployment and Administ rat ion G uide

12

Fig u re 2.2. Virt u aliz at io n Ho st select ed in th e so f t ware select ion screen

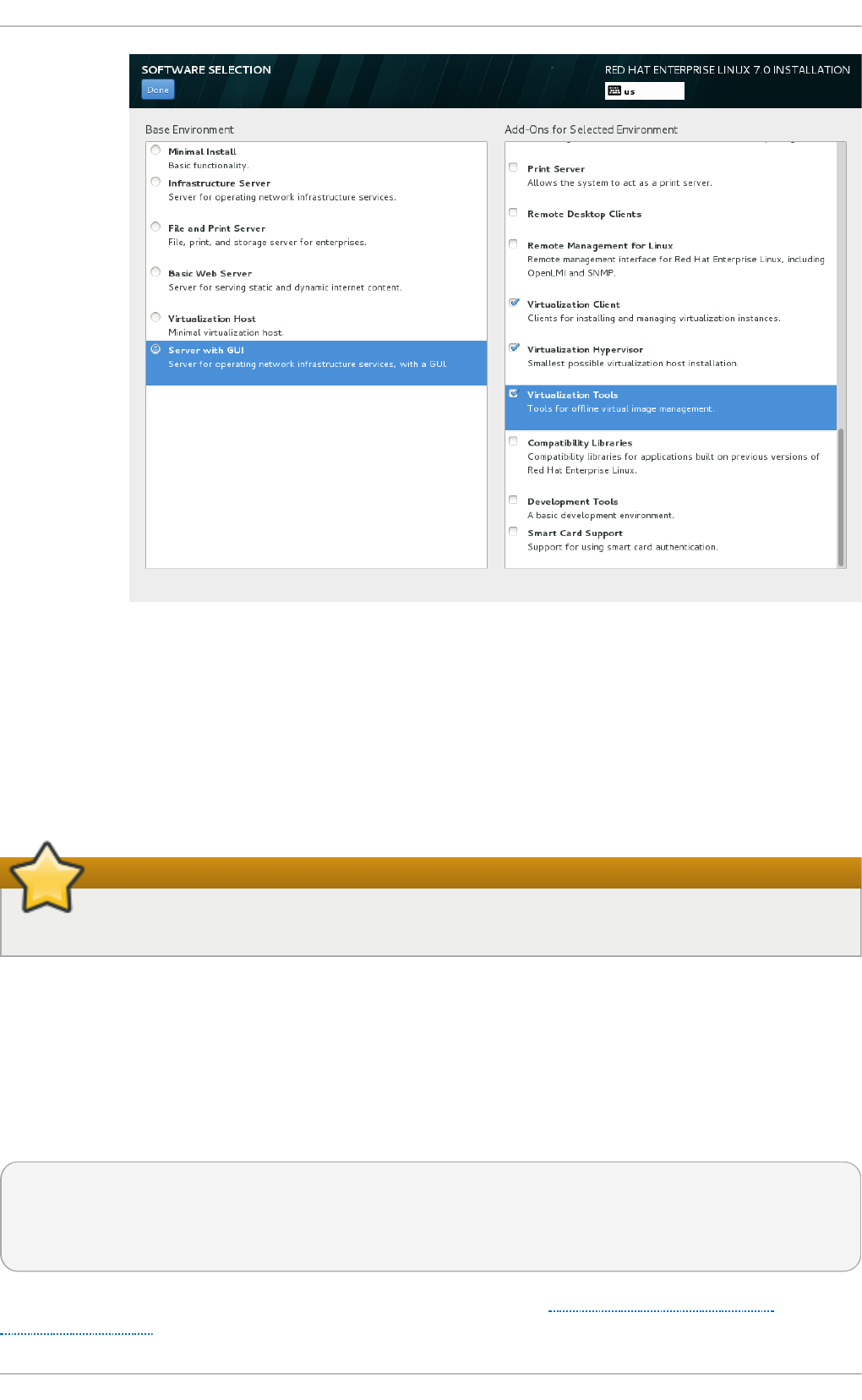

b.

Select in g a virt u aliz at io n h o st wit h a g rap h ical u ser in terf ace

Select the Server with GUI radio button under Base Environment, and the

checkboxes for Vi rtual i zati o n C l i ent, Virtualization Hypervisor, and

Vi rtual i zati o n T o o l s under Add-Ons for Selected Environment. This

installs a virtualization environment along with graphical tools for installing and

managing guest virtual machines.

Chapt er 2 . Inst alling t he virt ualiz at ion packages

13

Fig u re 2.3. Server wit h G UI select ed in t h e so f tware select io n screen

4. Fin aliz e in st allat io n

On the Installation Summary screen, complete the steps as necessary and click Beg i n

Instal l ati o n.

When the installation is complete, reboot the system.

Important

You require a valid virtualization entitlement to receive updates for the virtualization packages.

2.1.1. Inst alling KVM packages wit h Kickst art files

Kickstart files allow for large, automated installations without a user manually installing each

individual host system.

To use a Kickstart file to install Red Hat Enterprise Linux with the virtualization packages, append the

following package groups in the %packages section of your Kickstart file:

@virtualization-hypervisor

@virtualization-client

@virtualization-platform

@virtualization-tools

For more information about installing with Kickstart files, see the Red Hat Enterprise Linux 7

Installation Guide.

Virt ualizat ion Deployment and Administ rat ion G uide

14

2.2. Inst alling virt ualizat ion packages on an exist ing Red Hat

Ent erprise Linux syst em

This section describes the steps for installing the KVM hypervisor on an existing Red Hat Enterprise

Linux 7 system.

To install the packages, your machine must be registered and subscribed to the Red Hat Customer

Portal. To register via Red Hat Subscription Manager, run the subscription-manager register

command and follow the prompts. Alternatively, run the Red Hat Subscription Manager application

from Ap p licat io n s → Syst em T o o ls on the desktop to register.

If you do not have a valid Red Hat subscription, visit the Red Hat online store to obtain one. For more

information on registering and subscribing a system to the Red Hat Customer Portal, see

https://access.redhat.com/solutions/253273.

2.2.1. Inst alling t he virt ualiz at ion packages wit h yum

To use virtualization on Red Hat Enterprise Linux, you require at minimum the qemu-kvm, qemu-img,

and libvirt packages. These packages provide the user-level KVM emulator, disk image manager, and

virtualization management tools on the host Red Hat Enterprise Linux system.

The libvirt package provides the server and host-side libraries for interacting with hypervisors and

host systems, and the l i bvi rtd daemon that handles the library calls, manages virtual machines

and controls the hypervisor.

To install these packages, run the following command:

# yum install qemu-kvm qemu-img libvirt

Several additional virtualization management packages are also available and are recommended

when using virtualization:

Reco mmen d ed virt u aliz at io n p ackag es

virt-install

This package provides the vi rt-i nstal l command for creating virtual machines from the

command line.

libvirt-python

The libvirt-python package contains a module that permits applications written in the Python

programming language to use the interface supplied by the libvirt API.

virt-manager

virt-manager, also known as Virt u al Mach in e Man ag er, provides a graphical tool for

administering virtual machines. It uses the libvirt-client library as the management API.

libvirt-client

The libvirt-client package provides the client-side APIs and libraries for accessing libvirt

servers. The libvirt-client package includes the virsh command line tool to manage and

control virtual machines and hypervisors from the command line or a special virtualization

shell.

Chapt er 2 . Inst alling t he virt ualiz at ion packages

15

Install all of these recommended virtualization packages with the following command:

# yum install virt-install libvirt-python virt-manager python-virtinst

libvirt-client

2.2.2. Inst alling virt ualiz at ion package groups

The virtualization packages can also be installed from package groups. The following table

describes the virtualization package groups and what they provide.

Note

Note that the qemu-img package is installed as a dependency of the Vi rtual i zati o n

package group if it is not already installed on the system. It can also be installed manually

with the yum install qemu-img command as described previously.

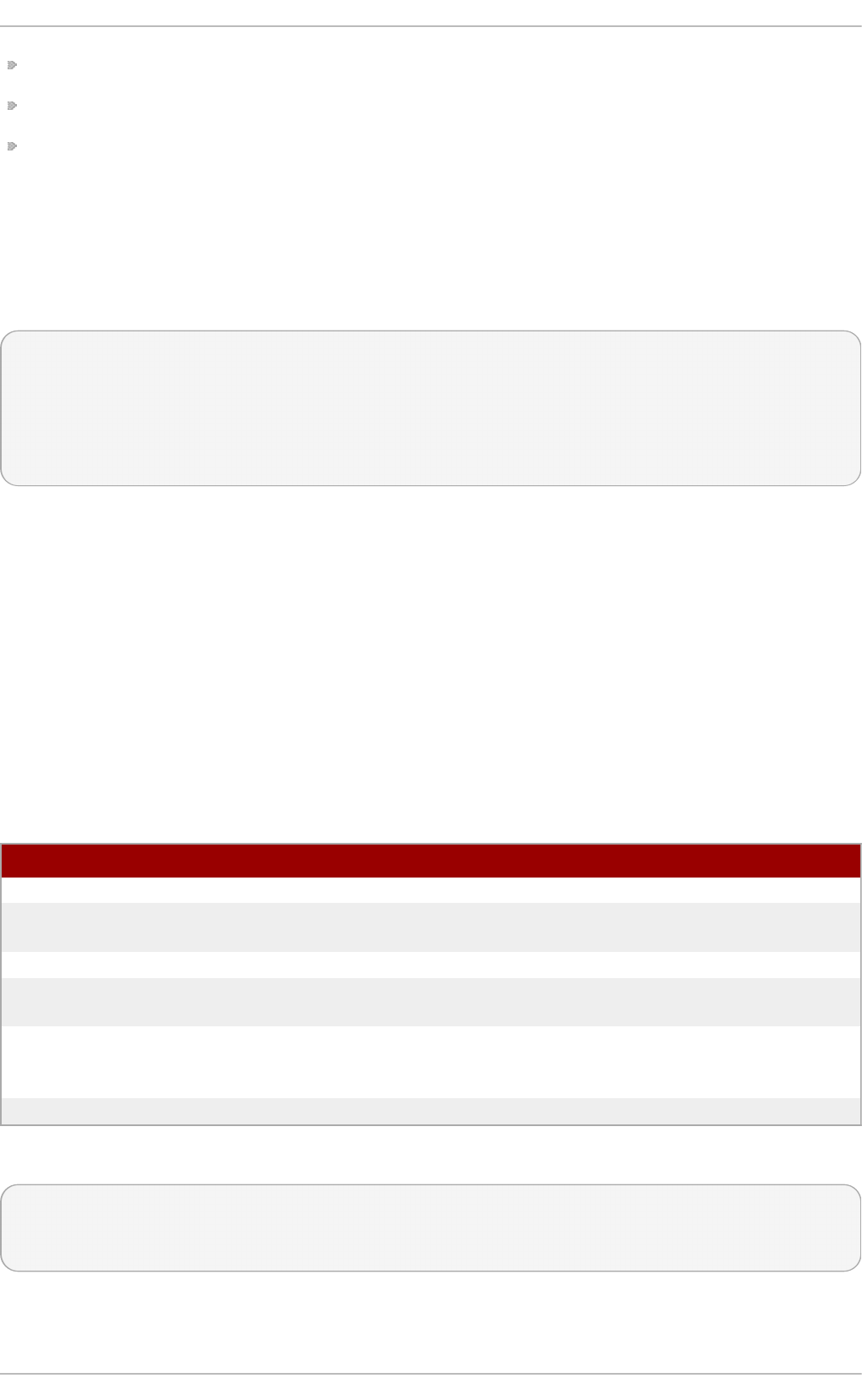

T ab le 2.1. Virt u aliz at io n Packag e G ro u p s

Packag e G ro u p Descrip t io n Man d at o ry

Packages

O p t io n al Packag es

Vi rtual i zati o n

Hypervisor

Smallest possible

virtualization host

installation

libvirt, qemu-kvm qemu-kvm-tools

Vi rtual i zati o n

C l i ent

Clients for installing

and managing

virtualization

instances

gnome-boxes, virt-

install, virt-manager,

virt-viewer

virt-top, libguestfs-

tools, libguestfs-tools-c

Vi rtual i zati o n

P l atfo rm

Provides an interface

for accessing and

controlling virtual

machines and

containers

libvirt, libvirt-client, virt-

who

fence-virtd-libvirt,

fence-virtd-multicast,

fence-virtd-serial,

libvirt-cim, libvirt-java,

libvirt-snmp, perl-Sys-

Virt

Vi rtual i zati o n

Tools

Tools for offline virtual

image management

libguestfs libguestfs-java,

libguestfs-tools,

libguestfs-tools-c

To install a package group, run the yum groupinstall <package group> command. For

instance, to install the Vi rtual i zati o n T o o l s package group, run:

# yum groupinstall "Virtualization Tools"

2.3. Regist ering t he hypervisor and virt ual machine

Red Hat Enterprise Linux 6 and 7 require that every guest virtual machine is mapped to a specific

hypervisor in order to ensure that every guest is allocated the same level of subscription service. To

do this you need to install a subscription agent that automatically detects all guest Virtual Machines

(VMs) on each KVM hypervisor that is installed and registered, which in turn will create a mapping file

that sits on the host. This mapping file ensures that all guest VMs receive the following benefits:

Subscriptions specific to virtual systems are readily available and can be applied to all of the

Virt ualizat ion Deployment and Administ rat ion G uide

16

associated guest VMs

All subscription benefits that can be inherited from the hypervisor are readily available and can

be applied to all of the associated guest VMs.

Note

The information provided in this chapter is specific to Red Hat Enterprise Linux subscriptions

only. If you also have a Red Hat Enterprise Virtualization subscription, or a Red Hat Satellite

subscription, you should also consult the virt-who information provided with those

subscriptions.

2.3.1. Inst alling virt -who on t he host physical machine

1. Reg ist er t h e KVM h yp erviso r

Register the KVM Hypervisor by running the subscription-manager register

[options] command in a terminal as the root user on the host physical machine. More

options are available using the # subscription-manager register --help menu. In

cases where you are using a username and password, use the credentials that are known to

the subscription manager. If this is your very first time subscribing and you do not have a

user account, contact customer support. For example to register the VM as 'admin' with

'secret' as a password, you would send the following command:

[root@rhel-server ~]# subscription-manager register --

username=admin --password=secret --auto-attach --type=hypervisor

2. In st all the virt - wh o p ackag es

Install the virt-who packages, by running the following command in a terminal as root on the

host physical machine:

[root@rhel-server ~]# yum install virt-who

3. Creat e a virt - wh o co n f igurat io n f ile

Add a configuration file in the /etc/virt-who.d/ directory. It does not matter what the

name of the file is, but you should give it a name that makes sense and the file must be

located in the /etc/virt-who.d/ directory. Inside that file add the following snippet and

remember to save the file before closing it.

[libvirt]

type=libvirt

4. St art t h e virt - wh o service

Start the virt-who service by running the following command in a terminal as root on the host

physical machine:

[root@virt-who ~]# systemctl start virt-who.service

[root@virt-who ~]# systemctl enable virt-who.service

Chapt er 2 . Inst alling t he virt ualiz at ion packages

17

5. Co n f irm virt - wh o service is receivin g g u est in f ormat io n

At this point, the virt-who service will start collecting a list of domains from the host. Check the

/var/log/rhsm/rhsm.log file on the host physical machine to confirm that the the file

contains a list of the guest VMs. For example:

2015-05-28 12:33:31,424 DEBUG: Libvirt domains found: [{'guestId':

'58d59128-cfbb-4f2c-93de-230307db2ce0', 'attributes': {'active': 0,

'virtWhoType': 'libvirt', 'hypervisorType': 'QEMU'}, 'state': 5}]

Pro ced ure 2.2. Man ag in g t h e su b scrip t io n on the cu st o mer p o rt al

1. Su b scrib in g t h e h yp erviso r

As the virtual machines will be receiving the same subscription benefits as the hypervisor, it is

important that the hypervisor has a valid subscription and that the subscription is available

for the VMs to use.

a. Lo g in t o t he cu st omer p o rt al

Login to the Red Hat customer portal https://access.redhat.com/ and click the

Subscriptions button at the top of the page.

b. Click t h e Syst ems lin k

In the Subscriber Inventory section (towards the bottom of the page), click

Systems link.

c. Select the h yp erviso r

On the Systems page, there is a table of all subscribed systems. Click on the name of

the hypervisor (localhost.localdomain for example). In the details page that opens,

click Attach a subscription and select all the subscriptions listed. Click Attach

Selected. This will attach the host's physical subscription to the hypervisor so that

the guests can benefit from the subscription.

2. Su b scrib ing t h e g u est virt u al mach in es - f irst t ime u se

This step is for those who have a new subscription and have never subscribed a guest virtual

machine before. If you are adding virtual machines, skip this step. To consume the

subscription assigned to the hypervisor profile on the machine running the virt-who service,

auto subscribe by running the following command in a terminal, on the guest virtual machine

as root.

[root@virt-who ~]# subscription-manager attach --auto

3. Su b scrib ing ad d it io n al g u est virt ual mach in es

If you just subscribed a for the first time, skip this step. If you are adding additional virtual

machines, it should be noted that running this command will not necessarily re-attach the

same pools to the hypervisor. This is because removing all subscriptions then allowing auto

attach to resolve what is necessary for a given guest virtual machine may result in different

subscriptions consumed than before. This may not have any effect on your system, but it is

something you should be aware about. If you used a manual attachment procedure to attach

the virtual machine, which is not described below, you will need to re-attach those virtual

machines manually as the auto-attach will not work. Use the following command as root in a

Virt ualizat ion Deployment and Administ rat ion G uide

18

terminal to first remove the subscriptions for the old guests and then use the auto-attach to

attach subscriptions to all the guests. Run these commands on the guest virtual machine.

[root@virt-who ~]# subscription-manager remove --all

[root@virt-who ~]# subscription-manager attach --auto

4. Co n f irm su bscrip t io ns are at tach ed

Confirm that the subscription is attached to the hypervisor by running the following command

as root in a terminal on the guest virtual machine:

[root@virt-who ~]# subscription-manager list --consumed

Output similar to the following will be displayed. Pay attention to the Subscription Details. It

should say 'Subscription is current'.

[root@virt-who ~]# subscription-manager list --consumed

+-------------------------------------------+

Consumed Subscriptions

+-------------------------------------------+

Subscription Name: Awesome OS with unlimited virtual guests

Provides: Awesome OS Server Bits

SKU: awesomeos-virt-unlimited

Contract: 0

Account: 12331131231

Serial: 7171985151317840309

Pool ID: 2c91808451873d3501518742f556143d

Provides Management: No

Active: True

Quantity Used: 1

Service Level:

Service Type:

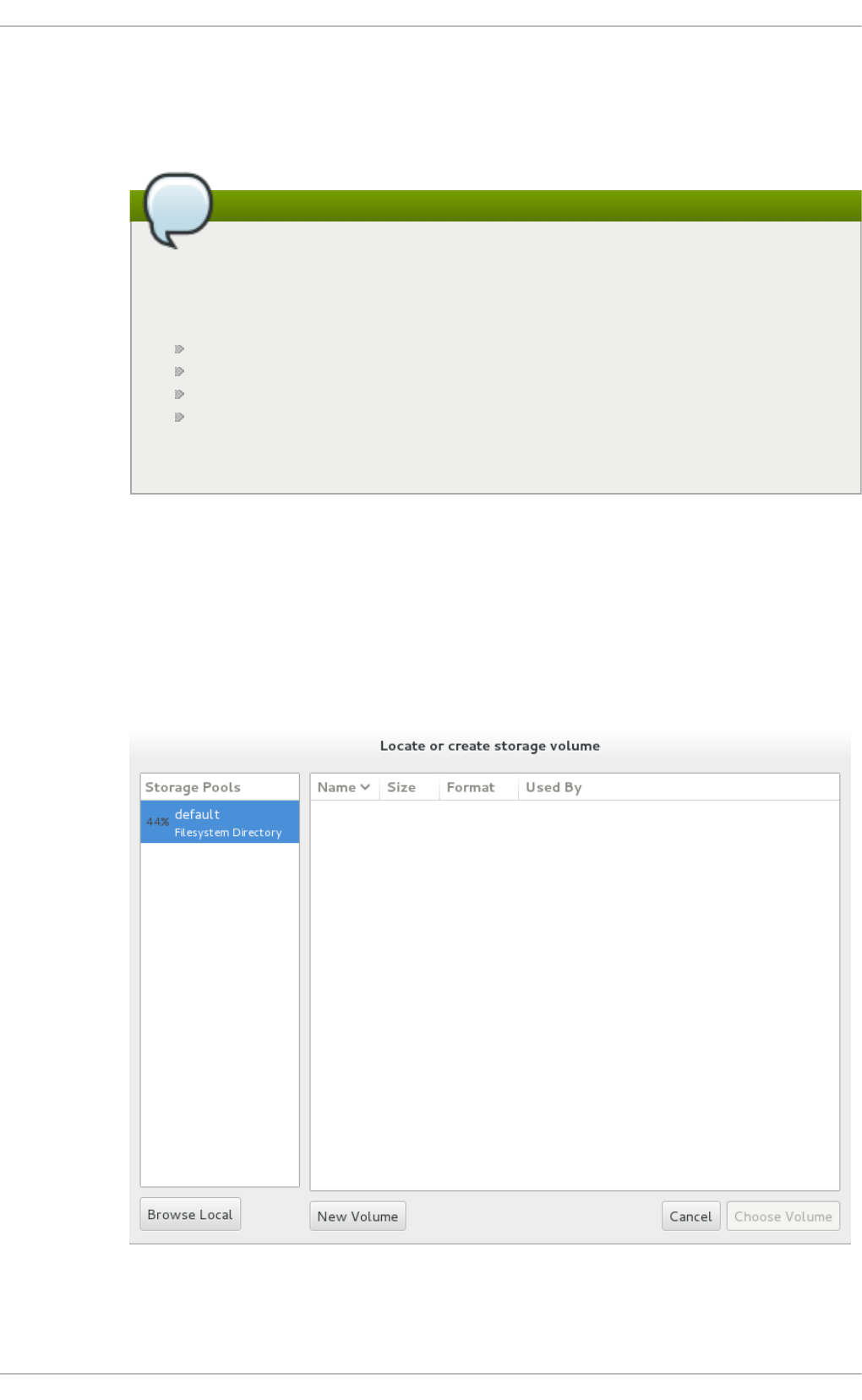

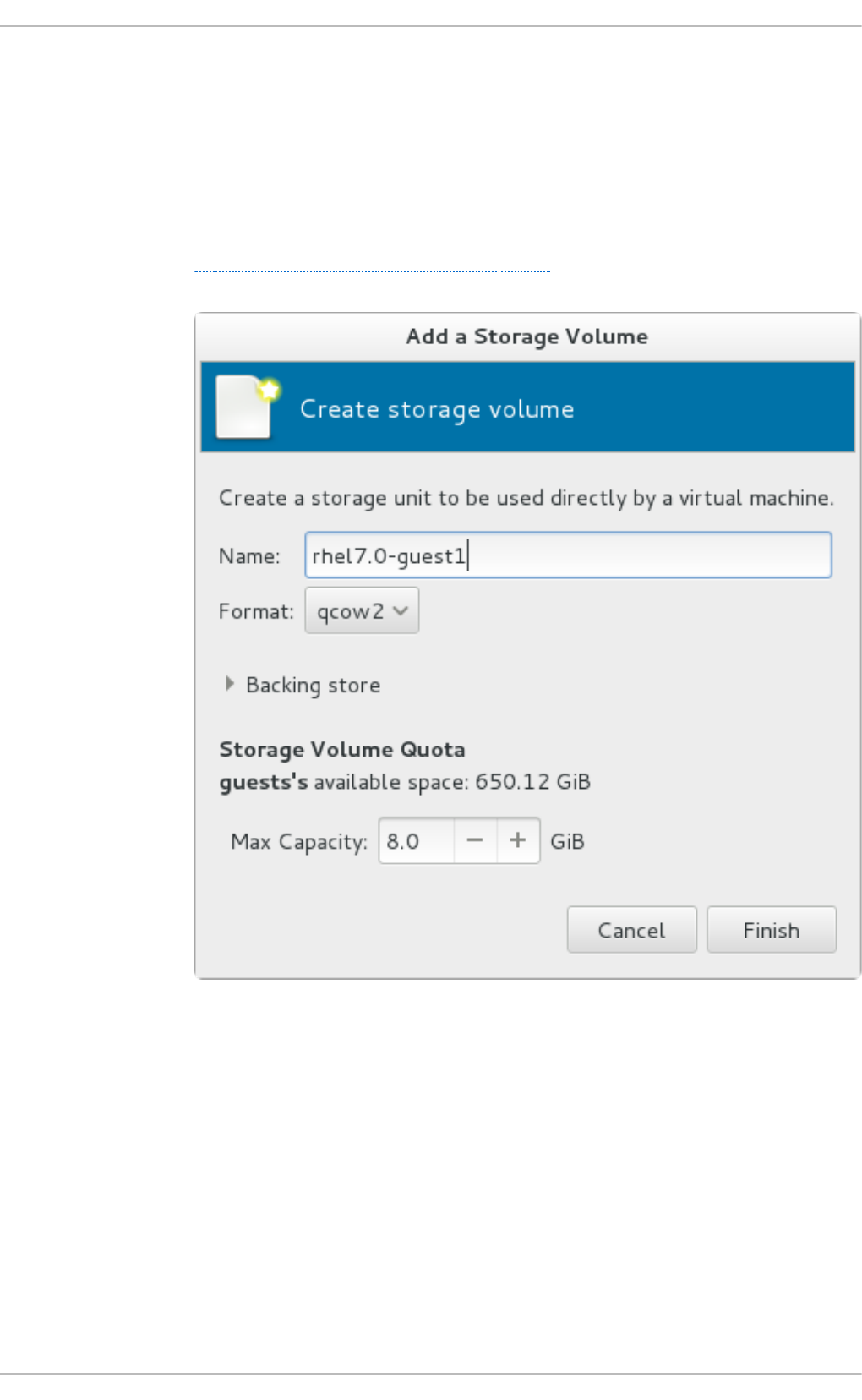

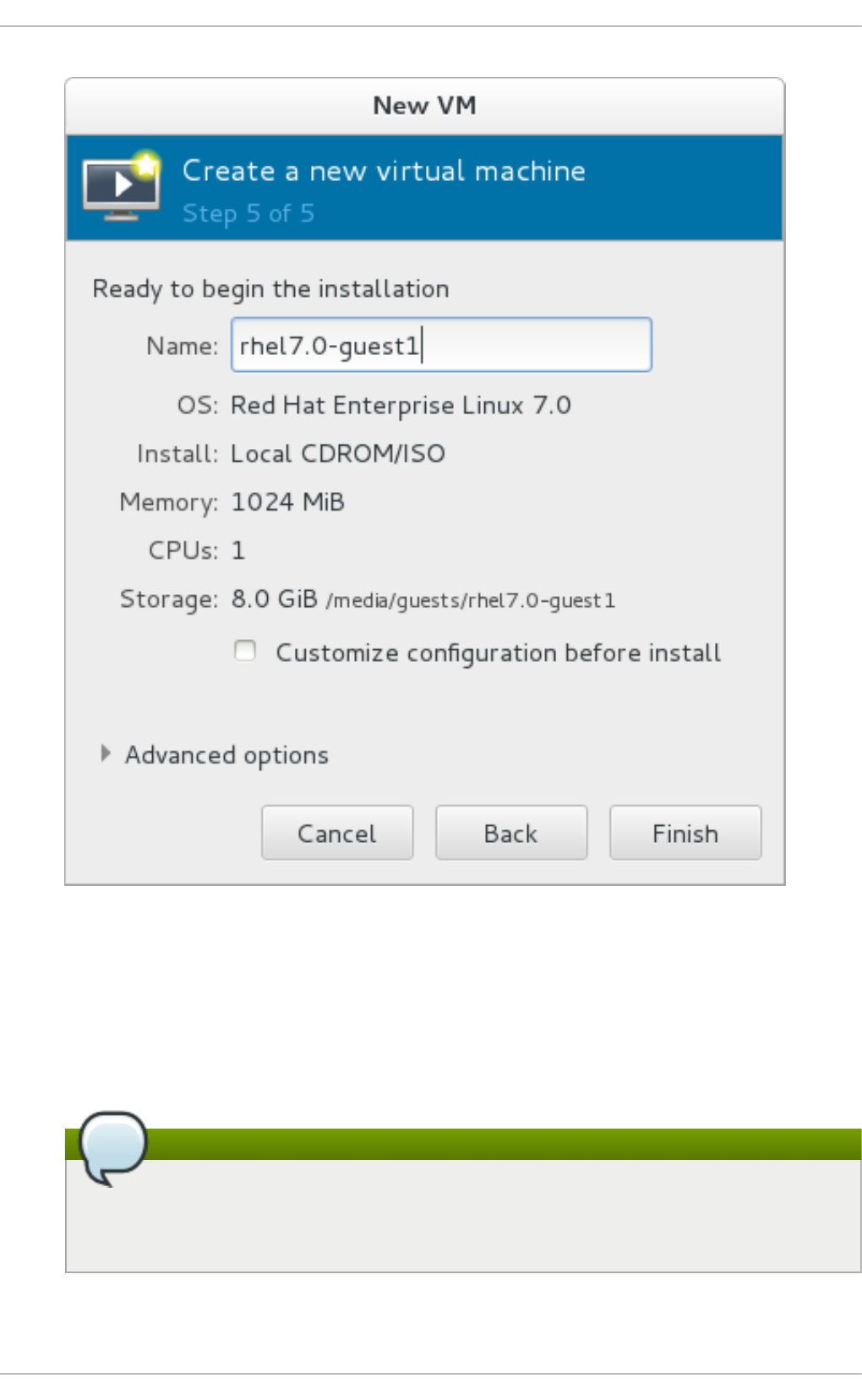

Status Details: Subscription is current