Ruby And Mongo DB Web Development Beginner's Guide

User Manual:

Open the PDF directly: View PDF ![]() .

.

Page Count: 332 [warning: Documents this large are best viewed by clicking the View PDF Link!]

- Cover

- Copyright

- Credits

- About the Author

- Acknowledgement

- About the Reviewers

- www.PacktPub.com

- Table of Contents

- Preface

- Chapter 1: Installing MongoDB and Ruby

- Installing Ruby

- Installing MongoDB

- Configuring the MongoDB server

- Starting MongoDB

- Stopping MongoDB

- The MongoDB CLI

- Installing Rails/Sinatra

- Summary

- Chapter 2: Diving Deep into MongoDB

- Creating documents

- Time for action – creating our first document

- Using MongoDB embedded documents

- Time for action – embedding reviews and votes

- Using MongoDB document relationships

- Time for action – creating document relations

- Comparing MongoDB versus SQL syntax

- Using Map/Reduce instead of join

- Time for action – writing the map function for calculating vote statistics

- Time for action – writing the reduce function to process emitted information

- Understanding the Ruby perspective

- Time for action – creating the project

- Time for action – start your engines

- Time for action – configuring Mongoid

- Time for action – planning the object schema

- Time for action – putting it all together

- Time for action – adding reviews to books

- Time for action – embedding Lease and Purchase models

- Time for action – writing the map function to calculate ratings

- Time for action – writing the reduce function to process the emitted results

- Time for action – working with Map/Reduce using Ruby

- Summary

- Chapter 3: MongoDB Internals

- Understanding Binary JSON

- What is ObjectId?

- Documents and collections

- JavaScript and MongoDB

- Time for action – writing our own custom functions in MongoDB

- Ensuring write consistency or "read your writes"

- Global write lock

- Transactional support in MongoDB

- Time for action – implementing optimistic locking

- Why are there no joins in MongoDB?

- Summary

- Chapter 4: Working Out Your Way with Queries

- Searching by fields in a document

- Time for action – searching by a string value

- Time for action – fetching only for specific fields

- Time for action – skipping documents and limiting our search results

- Time for action – finding books by name or publisher

- Time for action – finding the highly ranked books

- Searching inside arrays

- Time for action – searching inside reviews

- Searching inside hashes

- Searching inside embedded documents

- Searching with regular expressions

- Time for action – using regular expression searches

- Summary

- Chapter 5: Ruby DataMappers: Ruby and MongoDB Go Hand in Hand

- Why do we need Ruby DataMappers

- Time for action – using mongo gem

- The Ruby DataMappers for MongoDB

- Setting up DataMappers

- Time for action – configuring MongoMapper

- Time for action – setting up Mongoid

- Creating, updating, and destroying documents

- Time for action – creating and updating objects

- Using finder methods

- Using MongoDB criteria

- Time for action – fetching using the where criterion

- Understanding model relationships

- Time for action – relating models

- Time for action – categorizing books

- Time for action – adding book details

- Time for action – managing the driver entities

- Time for action – creating vehicles using basic polymorphism

- Using embedded objects

- Time for action – creating embedded objects

- Reverse embedded relations in Mongoid

- Time for action – using embeds_one without specifying embedded_in

- Time for action – using embeds_many without specifying embedded_in

- Understanding embedded polymorphism

- Time for action – adding licenses to drivers

- Time for action – insuring drivers

- Choosing whether to embed or to associate documents

- Mongoid or MongoMapper – the verdict

- Summary

- Chapter 6: Modeling Ruby with Mongoid

- Developing a web application with Mongoid

- Time for action – setting up a Rails project

- Time for action – using Sinatra professionally

- Defining attributes in models

- Time for action – adding dynamic fields

- Time for action – localizing fields

- Using arrays and hashes in models

- Defining relations in models

- Time for action – configuring the many-to-many relation

- Time for action – setting up the following and followers relationship

- Time for action – setting up cyclic relations

- Managing changes in models

- Time for action – changing models

- Mixing in Mongoid modules

- Time for action – getting paranoid

- Time for action – including a version

- Summary

- Chapter 7: Achieving High Performance on Your Ruby Application with MongoDB

- Profiling MongoDB

- Time for action – enabling profiling for MongoDB

- Using the explain function

- Time for action – explaining a query

- Using covered indexes

- Time for action – using covered indexes

- Other MongoDB performance tuning techniques

- Understanding web application performance

- Optimizing our code for performance

- Optimizing and tuning the web application stack

- Summary

- Chapter 8: Rack, Sinatra, Rails, and

MongoDB – Making Use of them All

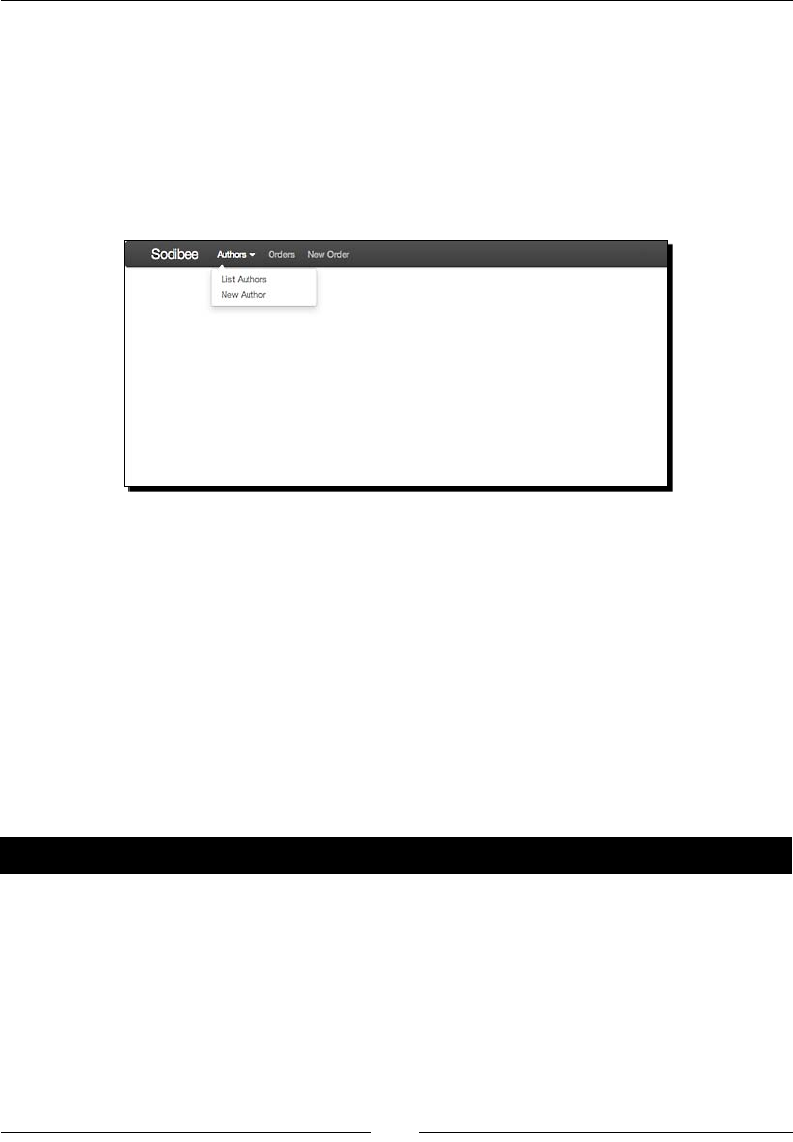

- Revisiting Sodibee

- The Rails way

- Time for action – modeling the Author class

- Time for action – writing the Book, Category and Address models

- Time for action – modeling the Order class

- Time for action – configuring routes

- Time for action – writing the AuthorsController

- Time for action – designing the layout

- Time for action – listing authors

- Time for action – adding new authors and books

- The Sinatra way

- Time for action – setting up Sinatra and Rack

- Testing and automation using RSpec

- Time for action – installing RSpec

- Time for action – sporking it

- Documenting code using YARD

- Summary

- Chapter 9: Going Everywhere – Geospatial Indexing with MongoDB

- What is geolocation

- Identifying the exact geolocation

- Storing coordinates in MongoDB

- Time for action – geocoding the Address model

- Time for action – saving geolocation coordinates

- Time for action – using geocoder for storing coordinates

- Firing geolocation queries

- Time for action – finding nearby addresses

- Time for action – firing near queries in Mongoid

- Summary

- Chapter 10: Scaling MongoDB

- High availability and failover via replication

- Time for action – setting up the master/slave replication

- Time for action – implementing replica sets

- Implementing replica sets for Sodibee

- Time for action – configuring replica sets for Sodibee

- Implementing sharding

- Time for action – setting up the shards

- Time for action – starting the config server

- Time for action – setting up mongos

- Implementing Map/Reduce

- Time for action – planning the Map/Reduce functionality

- Time for action – Map/Reduce via the mongo console

- Time for action – Map/Reduce via Ruby

- Time for action – iterating Ruby objects

- Summary

- Pop Quiz Answers

- Index

Ruby and MongoDB

Web Development

Beginner's Guide

Create dynamic web applicaons by combining

the power of Ruby and MongoDB

Gautam Rege

BIRMINGHAM - MUMBAI

Ruby and MongoDB Web Development Beginner's Guide

Copyright © 2012 Packt Publishing

All rights reserved. No part of this book may be reproduced, stored in a retrieval system,

or transmied in any form or by any means, without the prior wrien permission of the

publisher, except in the case of brief quotaons embedded in crical arcles or reviews.

Every eort has been made in the preparaon of this book to ensure the accuracy of the

informaon presented. However, the informaon contained in this book is sold without

warranty, either express or implied. Neither the author, nor Packt Publishing, and its dealers

and distributors will be held liable for any damages caused or alleged to be caused directly

or indirectly by this book.

Packt Publishing has endeavored to provide trademark informaon about all of the

companies and products menoned in this book by the appropriate use of capitals.

However, Packt Publishing cannot guarantee the accuracy of this informaon.

First published: July 2012

Producon Reference: 1180712

Published by Packt Publishing Ltd.

Livery Place

35 Livery Street

Birmingham B3 2PB, UK.

ISBN 978-1-84951-502-3

www.packtpub.com

Cover Image by Asher Wishkerman (wishkerman@hotmail.com)

Credits

Author

Gautam Rege

Reviewers

Bob Chesley

Ayan Dave

Michael Kohl

Srikanth AD

Acquision Editor

Karkey Pandey

Lead Technical Editor

Dayan Hyames

Technical Editor

Prashant Salvi

Copy Editors

Alda Paiva

Laxmi Subramanian

Project Coordinator

Leena Purkait

Proofreader

Linda Morris

Indexer

Hemangini Bari

Graphics

Valenna D'silva

Manu Joseph

Producon Coordinator

Prachali Bhiwandkar

Cover Work

Prachali Bhiwandkar

About the Author

Gautam Rege has over twelve years of experience in soware development. He is

a Computer Engineer from Pune Instute of Computer Technology, Pune, India. Aer

graduang in 2000, he worked in various Indian soware development companies unl

2002, aer which, he seled down in Veritas Soware (now Symantec). Aer ve years

there, his urge to start his own company got the beer of him and he started Josh Soware

Private Limited along with his long me friend Sethupathi Asokan, who was also in Veritas.

He is currently the Managing Director at Josh Soware Private Limited. Josh in Hindi

(his mother tongue) means "enthusiasm" or "passion" and these are the qualies that the

company culture is built on. Josh Soware Private Limited works exclusively in Ruby and

Ruby related technologies, such as Rails – a decision Gautam and Sethu (as he is lovingly

called) took in 2007 and it has paid rich dividends today!

Acknowledgement

I would like to thank Sethu, my co-founder at Josh, for ensuring that my focus was on the

book, even during the hecc acvies at work. Thanks to Sash Talim, who encouraged

me to write this book and Sameer Tilak, for providing me with valuable feedback while

wring this book! Big thanks to Michael Kohl, who was of great help in ensuring that every

ny technical detail was accurate and rich in content. I have become "technically mature"

because of him!

The book would not have been completed without the posive and uncondional support

from my wife, Vaibhavi and daughter, Swara, who tolerated a lot of busy weekends and late

nights where I was toiling away on the book. Thank you so much!

Last, but not the least, a big thank you to Karkey, Leena, Dayan, Ayan, Prashant, and

Vrinda from Packt, who ensured that everything I did was in order and up to the mark.

About the Reviewers

Bob Chesley is a web and database developer of around twenty years currently concentrang

on JavaScript cross plaorm mobile applicaons and SaaS backend applicaons that they

connect to. Bob is also a small boat builder and sailor, enjoying the green waters of the Tampa

Bay area. He can be contacted via his web site (www.nhsoftwerks.com) or via his blog

(www.cfmeta.com) or by email at bob.chesley@nhsoftwerks.com.

Ayan Dave is a soware engineer with eight years of experience in building and delivering

high quality applicaons using languages and components in JVM ecosystem. He is passionate

about soware development and enjoys exploring open source projects. He is enthusiasc

about Agile and Extreme Programming and frequently advocates for them. Over the years he

has provided consulng services to several organizaons and has played many dierent roles.

Most recently he was the "Architectus Oryzus" for a small project team with big ideas and

subscribes to the idea that running code is the system of truth.

Ayan has a Master's degree in Computer Engineering from the University of Houston - Clear

Lake and holds PMP, PSM-1 and OCMJEA cercaons. He is also a speaker on various

technical topics at local user groups and community events. He currently lives in Columbus,

Ohio and works with Quick Soluons Inc. In the digital world he can be found at

http://daveayan.com.

Michael Kohl got interested in programming, and the wider IT world, at the young age of

12. Since then, he worked as a systems administrator, systems engineer, Linux consultant,

and soware developer, before crossing over into the domain of IT security where he

currently works. He's a programming language enthusiast who's especially enamored with

funconal programming languages, but also has a long-standing love aair with Ruby that

started around 2003. You can nd his musings online at http://citizen428.net.

www.PacktPub.com

Support les, eBooks, discount offers and more

You might want to visit www.PacktPub.com for support les and downloads related to

your book.

Did you know that Packt oers eBook versions of every book published, with PDF and ePub

les available? You can upgrade to the eBook version at www.PacktPub.com and as a print

book customer, you are entled to a discount on the eBook copy. Get in touch with us at

service@packtpub.com for more details.

At www.PacktPub.com, you can also read a collecon of free technical arcles, sign up for a

range of free newsleers and receive exclusive discounts and oers on Packt books and eBooks.

http://PacktLib.PacktPub.com

Do you need instant soluons to your IT quesons? PacktLib is Packt's online digital book

library. Here, you can access, read and search across Packt's enre library of books.

Why Subscribe?

Fully searchable across every book published by Packt

Copy and paste, print and bookmark content

On demand and accessible via web browser

Free Access for Packt account holders

If you have an account with Packt at www.PacktPub.com, you can use this to access

PacktLib today and view nine enrely free books. Simply use your login credenals for

immediate access.

Table of Contents

Preface 1

Chapter 1: Installing MongoDB and Ruby 11

Installing Ruby 12

Using RVM on Linux or Mac OS 12

The RVM games 16

The Windows saga 17

Using rbenv for installing Ruby 17

Installing MongoDB 18

Conguring the MongoDB server 19

Starng MongoDB 19

Stopping MongoDB 21

The MongoDB CLI 21

Understanding JavaScript Object Notaon (JSON) 21

Connecng to MongoDB using Mongo 22

Saving informaon 22

Retrieving informaon 23

Deleng informaon 24

Exporng informaon using mongoexport 24

Imporng data using mongoimport 25

Managing backup and restore using mongodump and mongorestore 25

Saving large les using mongoles 26

bsondump 28

Installing Rails/Sinatra 28

Summary 29

Chapter 2: Diving Deep into MongoDB 31

Creang documents 32

Time for acon – creang our rst document 32

NoSQL scores over SQL databases 33

Using MongoDB embedded documents 34

Table of Contents

[ ii ]

Time for acon – embedding reviews and votes 35

Fetching embedded objects 36

Using MongoDB document relaonships 36

Time for acon – creang document relaons 37

Comparing MongoDB versus SQL syntax 38

Using Map/Reduce instead of join 40

Understanding funconal programming 40

Building the map funcon 40

Time for acon – wring the map funcon for calculang vote stascs 41

Building the reduce funcon 41

Time for acon – wring the reduce funcon to process emied informaon 42

Understanding the Ruby perspecve 43

Seng up Rails and MongoDB 43

Time for acon – creang the project 43

Understanding the Rails basics 44

Using Bundler 44

Why do we need the Bundler 44

Seng up Sodibee 45

Time for acon – start your engines 45

Seng up Mongoid 46

Time for acon – conguring Mongoid 47

Building the models 48

Time for acon – planning the object schema 48

Tesng from the Rails console 52

Time for acon – pung it all together 52

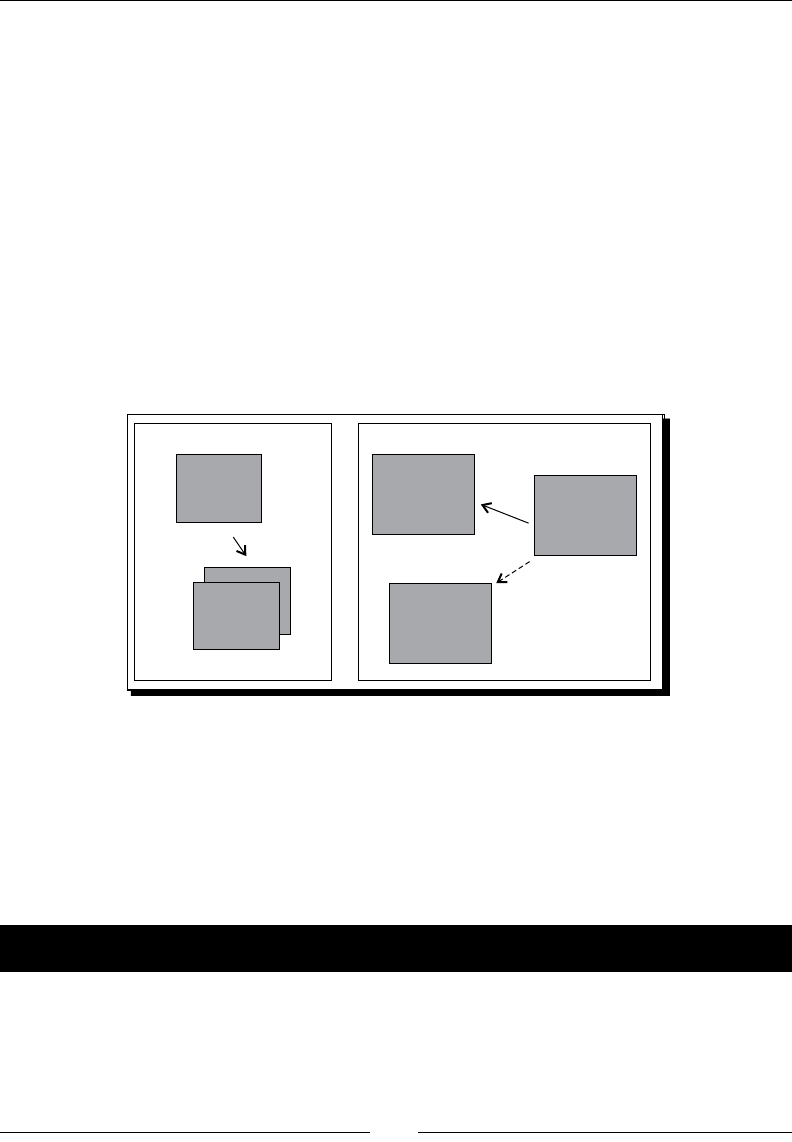

Understanding many-to-many relaonships in MongoDB 56

Using embedded documents 57

Time for acon – adding reviews to books 57

Choosing whether to embed or not to embed 58

Time for acon – embedding Lease and Purchase models 59

Working with Map/Reduce 60

Time for acon – wring the map funcon to calculate rangs 63

Time for acon – wring the reduce funcon to process the

emied results 64

Using Map/Reduce together 64

Time for acon – working with Map/Reduce using Ruby 65

Summary 68

Chapter 3: MongoDB Internals 69

Understanding Binary JSON 70

Fetching and traversing data 71

Manipulang data 71

Table of Contents

[ iii ]

What is ObjectId? 71

Documents and collecons 71

Capped collecons 72

Dates in MongoDB 72

JavaScript and MongoDB 72

Time for acon – wring our own custom funcons in MongoDB 73

Ensuring write consistency or "read your writes" 73

How does MongoDB use its memory-mapped storage engine? 74

Advantages of write-ahead journaling 74

Global write lock 74

Transaconal support in MongoDB 75

Understanding embedded documents and atomic updates 75

Implemenng opmisc locking in MongoDB 75

Time for acon – implemenng opmisc locking 76

Choosing between ACID transacons and MongoDB transacons 77

Why are there no joins in MongoDB? 77

Summary 79

Chapter 4: Working Out Your Way with Queries 81

Searching by elds in a document 81

Time for acon – searching by a string value 82

Querying for specic elds 84

Time for acon – fetching only for specic elds 84

Using skip and limit 86

Time for acon – skipping documents and liming our search results 86

Wring condional queries 87

Using the $or operator 88

Time for acon – nding books by name or publisher 88

Wring threshold queries with $gt, $lt, $ne, $lte, and $gte 88

Time for acon – nding the highly ranked books 89

Checking presence using $exists 89

Searching inside arrays 90

Time for acon – searching inside reviews 90

Searching inside arrays using $in and $nin 91

Searching for exact matches using $all 92

Searching inside hashes 92

Searching inside embedded documents 93

Searching with regular expressions 93

Time for acon – using regular expression searches 94

Summary 97

Table of Contents

[ iv ]

Chapter 5: Ruby DataMappers: Ruby and MongoDB Go Hand in Hand 99

Why do we need Ruby DataMappers 99

The mongo-ruby-driver 100

Time for acon – using mongo gem 101

The Ruby DataMappers for MongoDB 103

MongoMapper 104

Mongoid 104

Seng up DataMappers 104

Conguring MongoMapper 104

Time for acon – conguring MongoMapper 105

Conguring Mongoid 107

Time for acon – seng up Mongoid 107

Creang, updang, and destroying documents 110

Dening elds using MongoMapper 110

Dening elds using Mongoid 111

Creang objects 111

Time for acon – creang and updang objects 111

Using nder methods 112

Using nd method 112

Using the rst and last methods 113

Using the all method 113

Using MongoDB criteria 113

Execung condional queries using where 113

Time for acon – fetching using the where criterion 114

Revising limit, skip, and oset 115

Understanding model relaonships 116

The one to many relaon 116

Time for acon – relang models 116

Using MongoMapper 116

Using Mongoid 117

The many-to-many relaon 118

Time for acon – categorizing books 118

MongoMapper 118

Mongoid 119

Accessing many-to-many with MongoMapper 120

Accessing many-to-many relaons using Mongoid 120

The one-to-one relaon 121

Using MongoMapper 122

Using Mongoid 122

Time for acon – adding book details 123

Understanding polymorphic relaons 124

Implemenng polymorphic relaons the wrong way 124

Implemenng polymorphic relaons the correct way 124

Table of Contents

[ v ]

Time for acon – managing the driver enes 125

Time for acon – creang vehicles using basic polymorphism 129

Choosing SCI or basic polymorphism 132

Using embedded objects 133

Time for acon – creang embedded objects 134

Using MongoMapper 134

Using Mongoid 134

Using MongoMapper 137

Using Mongoid 137

Reverse embedded relaons in Mongoid 137

Time for acon – using embeds_one without specifying embedded_in 138

Time for acon – using embeds_many without specifying embedded_in 139

Understanding embedded polymorphism 140

Single Collecon Inheritance 141

Time for acon – adding licenses to drivers 141

Basic embedded polymorphism 142

Time for acon – insuring drivers 142

Choosing whether to embed or to associate documents 144

Mongoid or MongoMapper – the verdict 145

Summary 146

Chapter 6: Modeling Ruby with Mongoid 147

Developing a web applicaon with Mongoid 147

Seng up Rails 148

Time for acon – seng up a Rails project 148

Seng up Sinatra 149

Time for acon – using Sinatra professionally 151

Understanding Rack 156

Dening aributes in models 157

Accessing aributes 158

Indexing aributes 158

Unique indexes 159

Background indexing 159

Geospaal indexing 159

Sparse indexing 160

Dynamic elds 160

Time for acon – adding dynamic elds 160

Localizaon 162

Time for acon – localizing elds 162

Using arrays and hashes in models 164

Embedded objects 165

Table of Contents

[ vi ]

Dening relaons in models 165

Common opons for all relaons 165

:class_name opon 166

:inverse_of opon 166

:name opon 166

Relaon-specic opons 166

Opons for has_one 167

:as opon 167

:autosave opon 168

:dependent opon 168

:foreign_key opon 168

Opons for has_many 168

:order opon 168

Opons for belongs_to 169

:index opon 169

:polymorphic opon 169

Opons for has_and_belongs_to_many 169

:inverse_of opon 170

Time for acon – conguring the many-to-many relaon 171

Time for acon – seng up the following and followers relaonship 172

Opons for :embeds_one 175

:cascade_callbacks opon 175

:cyclic 175

Time for acon – seng up cyclic relaons 175

Opons for embeds_many 176

:versioned opon 176

Opons for embedded_in 176

:name opon 177

Managing changes in models 178

Time for acon – changing models 178

Mixing in Mongoid modules 179

The Paranoia module 180

Time for acon – geng paranoid 180

Versioning 182

Time for acon – including a version 182

Summary 185

Chapter 7: Achieving High Performance on Your Ruby Applicaon

with MongoDB 187

Proling MongoDB 188

Time for acon – enabling proling for MongoDB 188

Using the explain funcon 190

Time for acon – explaining a query 190

Using covered indexes 193

Table of Contents

[ vii ]

Time for acon – using covered indexes 193

Other MongoDB performance tuning techniques 196

Using mongostat 197

Understanding web applicaon performance 197

Web server response me 197

Throughput 198

Load the server using hperf 198

Monitoring server performance 199

End-user response and latency 202

Opmizing our code for performance 202

Indexing elds 202

Opmizing data selecon 203

Opmizing and tuning the web applicaon stack 203

Performance of the memory-mapped storage engine 203

Choosing the Ruby applicaon server 204

Passenger 204

Mongrel and Thin 204

Unicorn 204

Increasing performance of Mongoid using bson_ext gem 204

Caching objects 205

Memcache 205

Redis server 205

Summary 206

Chapter 8: Rack, Sinatra, Rails, and MongoDB – Making Use of them All 207

Revising Sodibee 208

The Rails way 208

Seng up the project 208

Modeling Sodibee 210

Time for acon – modeling the Author class 210

Time for acon – wring the Book, Category and Address models 211

Time for acon – modeling the Order class 212

Understanding Rails routes 213

What is the RESTful interface? 214

Time for acon – conguring routes 214

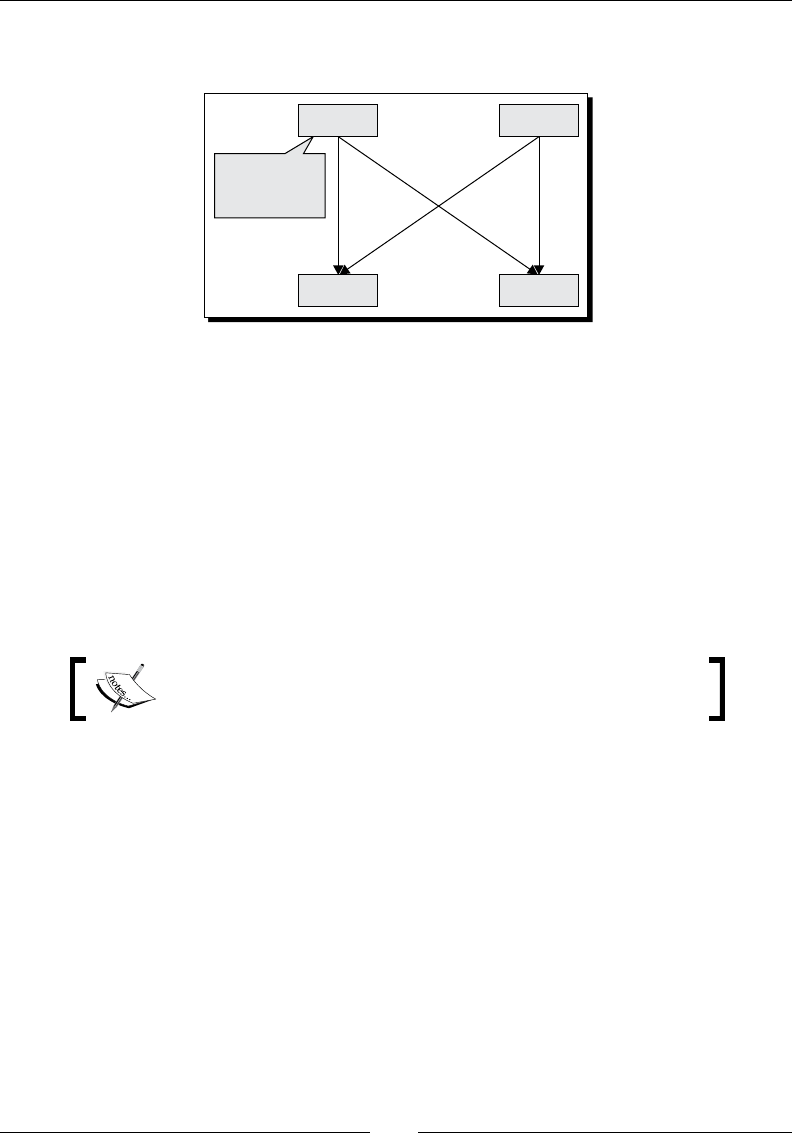

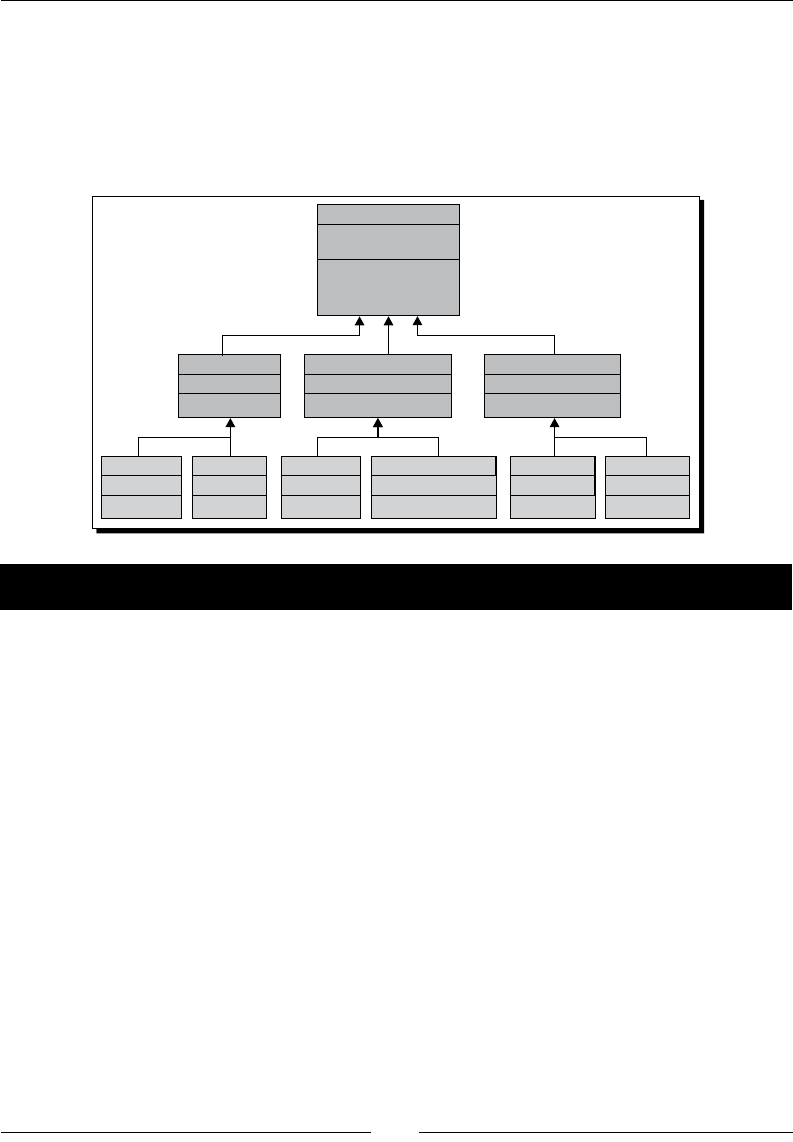

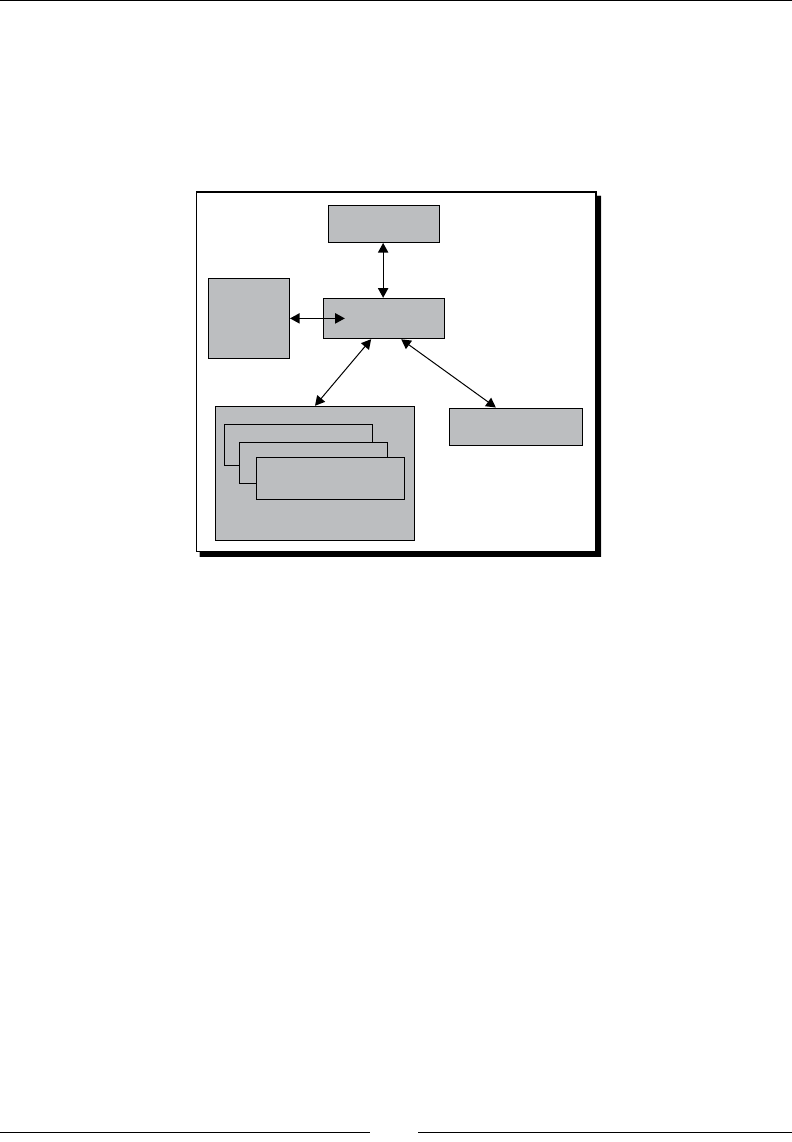

Understanding the Rails architecture 215

Processing a Rails request 216

Coding the Controllers and the Views 217

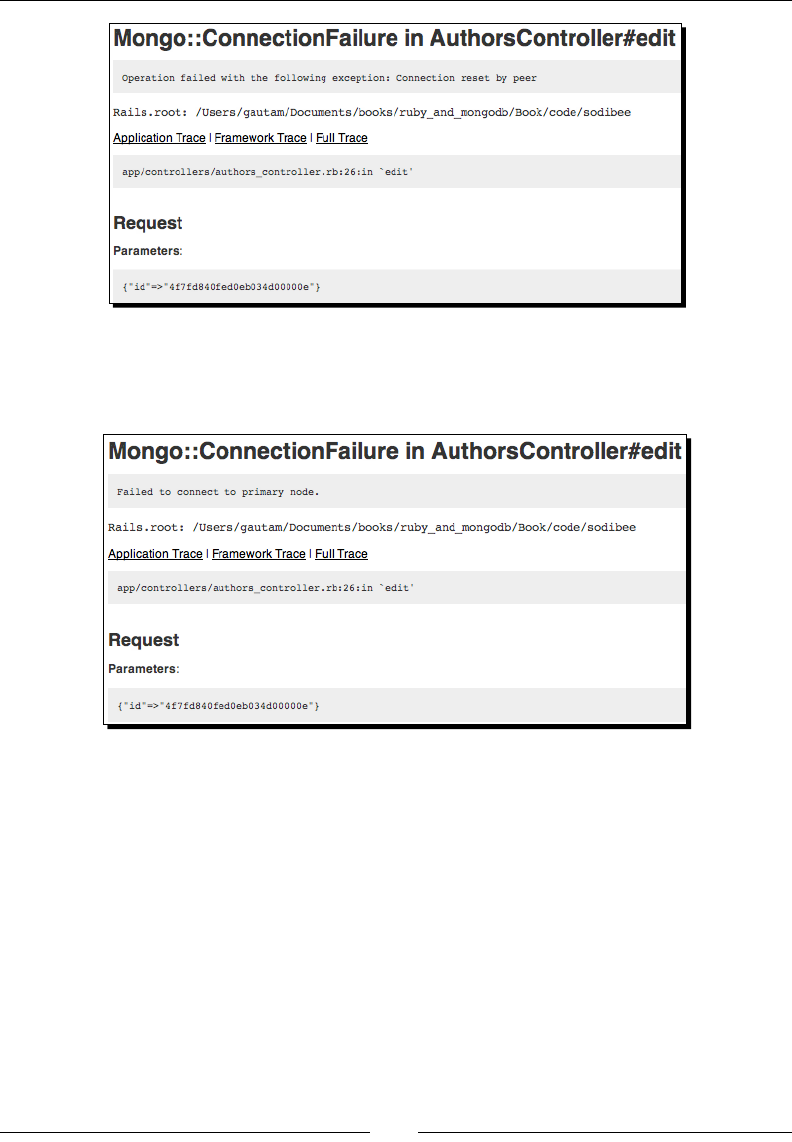

Time for acon – wring the AuthorsController 218

Solving the N+1 query problem using the includes method 219

Relang models without persisng them 220

Designing the web applicaon layout 223

Table of Contents

[ viii ]

Time for acon – designing the layout 223

Understanding the Rails asset pipeline 230

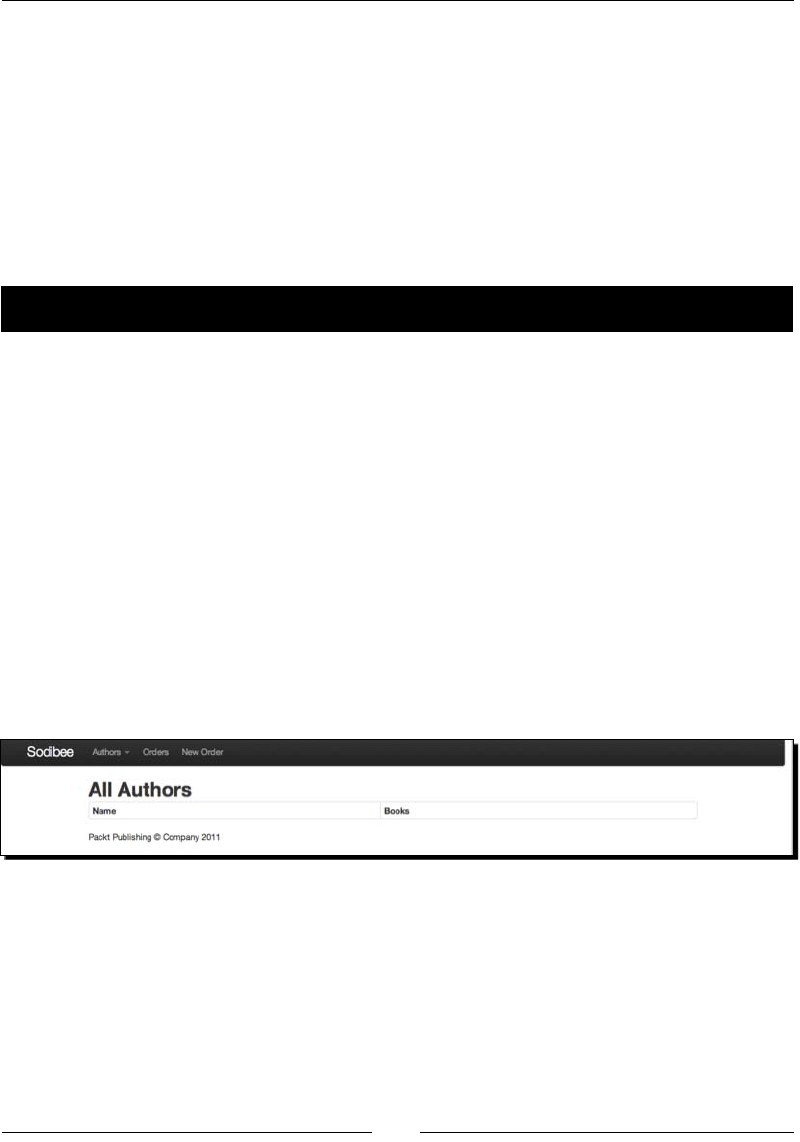

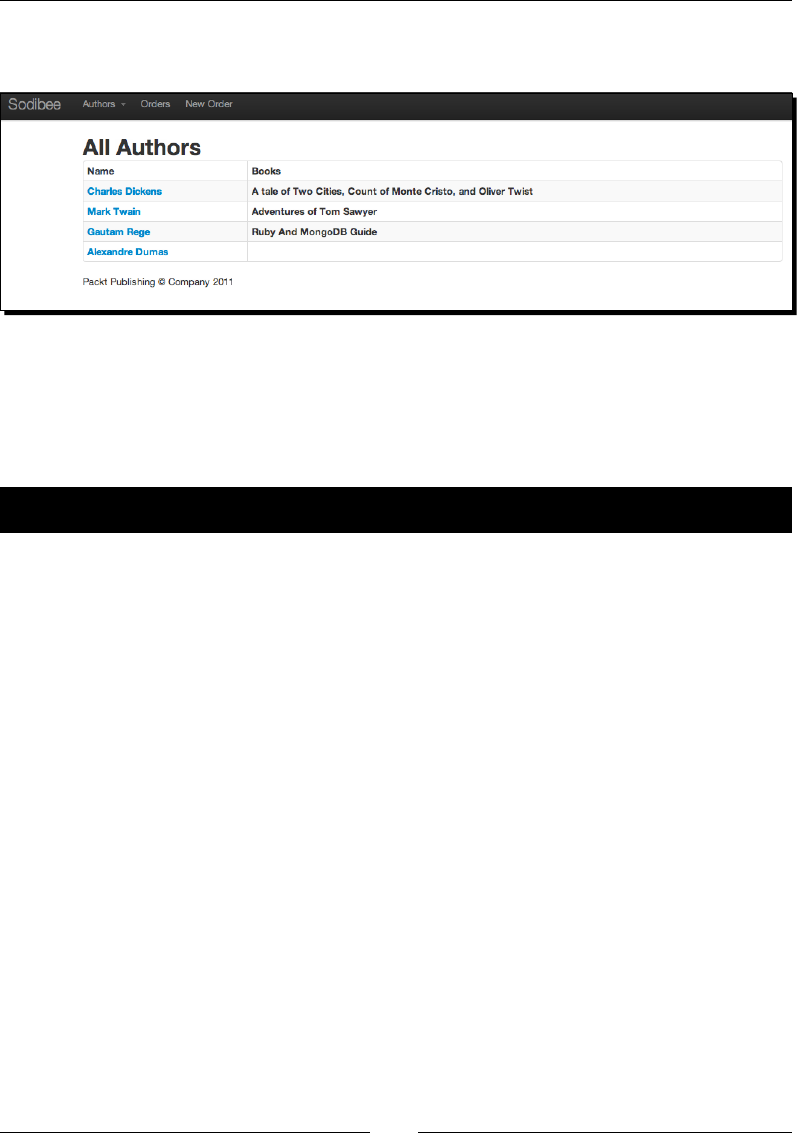

Designing the Authors lisng page 231

Time for acon – lisng authors 231

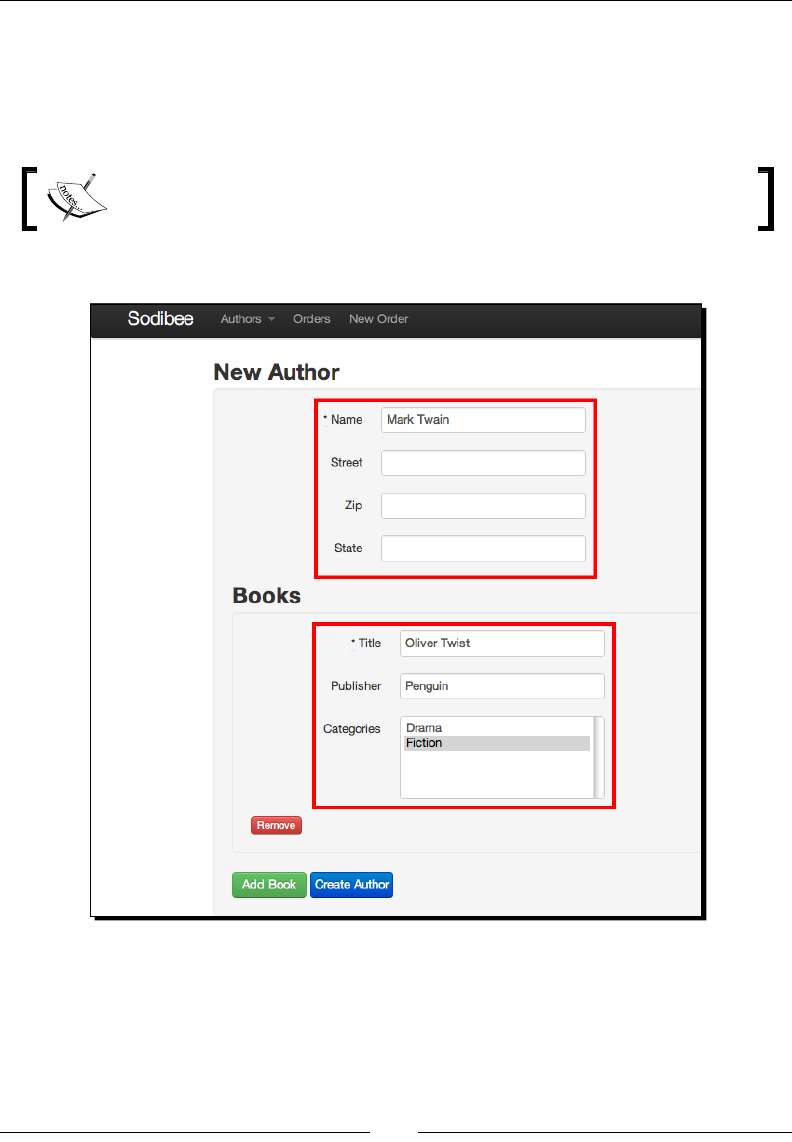

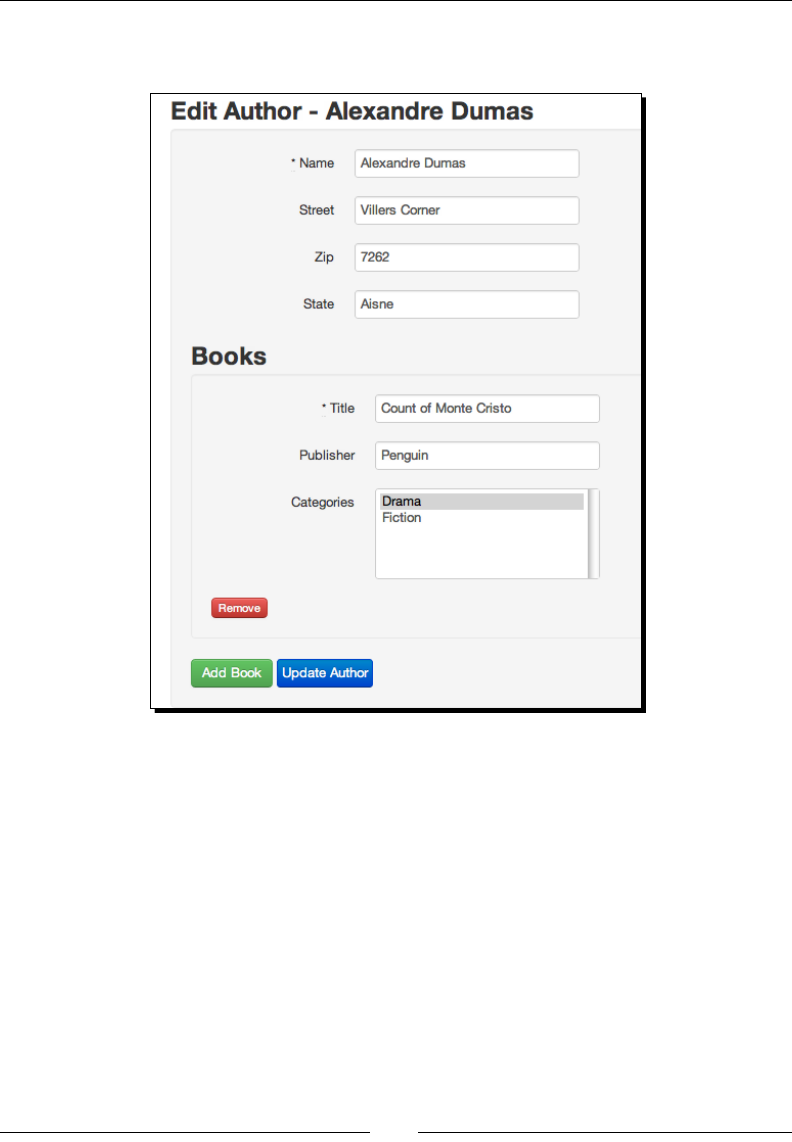

Adding new authors and their books 234

Time for acon – adding new authors and books 234

The Sinatra way 240

Time for acon – seng up Sinatra and Rack 240

Tesng and automaon using RSpec 243

Understanding RSpec 244

Time for acon – installing RSpec 244

Time for acon – sporking it 246

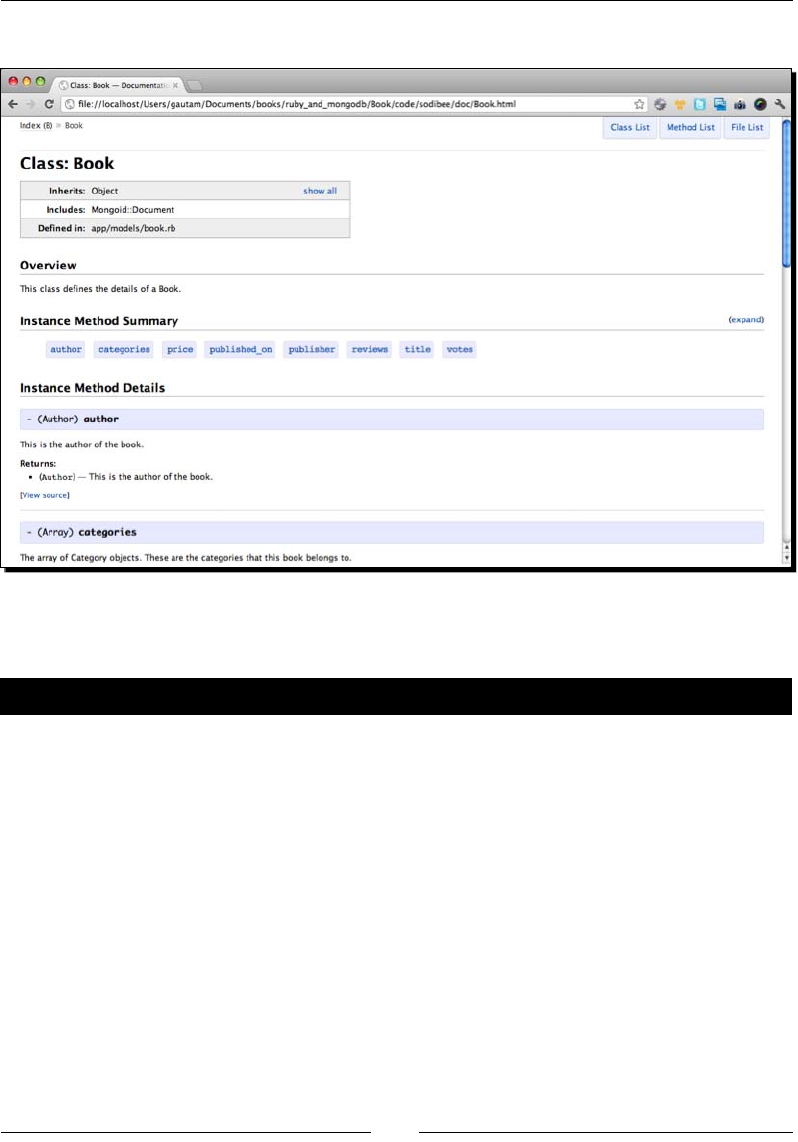

Documenng code using YARD 247

Summary 250

Chapter 9: Going Everywhere – Geospaal Indexing with MongoDB 251

What is geolocaon 252

How accurate is a geolocaon 253

Converng geolocaon to geocoded coordinates 253

Idenfying the exact geolocaon 254

Storing coordinates in MongoDB 255

Time for acon – geocoding the Address model 255

Tesng geolocaon storage 257

Time for acon – saving geolocaon coordinates 257

Using geocoder to update coordinates 258

Time for acon – using geocoder for storing coordinates 258

Firing geolocaon queries 260

Time for acon – nding nearby addresses 260

Using mongoid_spacial 262

Time for acon – ring near queries in Mongoid 262

Dierences between $near and $geoNear 263

Summary 264

Chapter 10: Scaling MongoDB 265

High availability and failover via replicaon 266

Implemenng the master/slave replicaon 266

Time for acon – seng up the master/slave replicaon 266

Using replica sets 271

Time for acon – implemenng replica sets 272

Recovering from crashes – failover 277

Adding members to the replica set 277

Implemenng replica sets for Sodibee 278

Table of Contents

[ ix ]

Time for acon – conguring replica sets for Sodibee 278

Implemenng sharding 283

Creang the shards 284

Time for acon – seng up the shards 284

Conguring the shards with a cong server 285

Time for acon – starng the cong server 285

Seng up the roung service – mongos 286

Time for acon – seng up mongos 286

Tesng shared replicaon 288

Implemenng Map/Reduce 289

Time for acon – planning the Map/Reduce funconality 290

Time for acon – Map/Reduce via the mongo console 291

Time for acon – Map/Reduce via Ruby 293

Performance benchmarking 295

Time for acon – iterang Ruby objects 295

Summary 298

Pop Quiz Answers 299

Index 301

Preface

And then there was light – a lightweight database! How oen have we all wanted some

database that was "just a data store"? Sure, you can use it in many complex ways but in

the end, it's just a plain simple data store. Welcome MongoDB!

And then there was light – a lightweight language that was fun to program in. It supports all

the constructs of a pure object-oriented language and is fun to program in. Welcome Ruby!

Both MongoDB and Ruby are the fruits of people who wanted to simplify things in a complex

world. Ruby, wrien by Yokihiro Matsumoto was made, picking the best constructs from Perl,

SmallTalk and Scheme. They say Matz (as he is called lovingly) "writes in C so that you don't

have to". Ruby is an object-oriented programming language that can be summarized in one

word: fun!

It's interesng to know that Ruby was created as an "object-oriented

scripng language". However, today Ruby can be compiled using JRuby

or Rubinius, so we could call it a programming language.

MongoDB has its roots from the word "humongous" and has the primary goal to manage

humongous data! As a NoSQL database, it relies heavily on data stored as key-value pairs.

Wait! Did we hear NoSQL – (also pronounced as No Sequel or No S-Q-L)? Yes! The roots of

MongoDB lie in its data not having a structured format! Even before we dive into Ruby and

MongoDB, it makes sense to understand some of these basic premises:

NoSQL

Brewer's CAP theorem

Basically Available, So-state, Eventually-consistent (BASE)

ACID or BASE

Preface

[ 2 ]

Understanding NoSQL

When the world was living in an age of SQL gurus and Database Administrators with

experse in stored procedures and triggers, a few brave men dared to rebel. The reason was

"simplicity". SQL was good to use when there was a structure and a xed set of rules. The

common databases such as Oracle, SQL Server, MySQL, DB2, and PostgreSQL, all promoted

SQL – referenal integrity, consistency, and atomic transacons. One of the SQL based rebels

- SQLite decided to be really "lite" and either ignored most of these constructs or did not

enforce them based on the premise: "Know what you are doing or beware".

Similarly, NoSQL is all about using simple keys to store data. Searching keys uses various

hashing algorithms, but at the end of the day all we have is a simple data store!

With the advent of web applicaons and crowd sourcing web portals, the mantra was

"more scalable than highly available" and "more speed instead of consistency". Some web

applicaons may be okay with these and others may not. What is important is that there is

now a choice and developers can choose wisely!

It's interesng to note that "key-value pair" databases have existed from the early 80's – the

earliest to my knowledge being Berkeley DB – blazingly fast, light-weight, and a very simple

library to use.

Brewer's CAP theorem

Brewer's CAP theorem states that any distributed computer system can support only any two

among consistency, atomicity, and paron tolerance.

Consistency deals with consistency of data or referenal integrity

Atomicity deals with transacons or a set of commands that execute as

"all or nothing"

Paron tolerance deals with distributed data, scaling and replicaon

There is sucient belief that any database can guarantee any two of the above. However, the

essence of the CAP theorem is not to nd a soluon to have all three behaviors, but to allow us

to look at designing databases dierently based on the applicaon we want to build!

For example, if you are building a Core Banking System (CBS), consistency and atomicity are

extremely important. The CBS must guarantee these two at the cost of paron tolerance.

Of course, a CBS has its failover systems, backup, and live replicaon to guarantee zero

downme, but at the cost of addional infrastructure and usually a single large instance

of the database.

Preface

[ 3 ]

A heavily accessed informaon web portal with a large amount of data requires speed

and scale, not consistency. Does the order of comments submied at the same me really

maer? What maers is how quickly and consistently the data was delivered. This is a clear

case of consistency and paron tolerance at the cost of atomicity.

An excellent arcle on the CAP theorem is at

http://www.julianbrowne.com/article/viewer/

brewers-cap-theorem.

What are BASE databases?

"Basically Available, So-state, Eventually-consistent"!!

Just the name suggests, a trade-o, BASE databases (yes, they are called BASE databases

intenonally to mock ACID databases) use some taccs to have consistency, atomicity, and

paron tolerance "eventually". They do not really defy the CAP theorem but work around it.

Simply put: I can aord my database to be consistent over me by synchronizing informaon

between dierent database nodes. I can cache data (also called "so-state") and persist it

later to increase the response me of my database. I can have a number of database nodes

with distributed data (paron tolerance) to be highly available and any loss of connecvity

to any nodes prompts other nodes to take over!

This does not mean that BASE databases are not prone to failure. It does imply however,

that they can recover quickly and consistently. They usually reside on standard commodity

hardware, thus making them aordable for most businesses!

A lot of databases on websites prefer speed, performance, and scalability instead of pure

consistency and integrity of data. However, as the next topic will cover, it is important to

know what to choose!

Using ACID or BASE?

"Atomic, Consistent, Isolated, and Durable" (ACID) is a cliched term used for transaconal

databases. ACID databases are sll very popular today but BASE databases are catching up.

ACID databases are good to use when you have heavy transacons at the core of your

business processes. But most applicaons can live without this complexity. This does not

imply that BASE databases do not support transacons, it's just that ACID databases are

beer suited for them.

Preface

[ 4 ]

Choose a database wisely – an old man said rightly! A choice of a database can decide the

future of your product. There are many databases today that we can choose from. Here are

some basic rules to help choose between databases for web applicaons:

A large number of small writes (vote up/down) – Redis

Auto-compleon, caching – Redis, memcached

Data mining, trending – MongoDB, Hadoop, and Big Table

Content based web portals – MongoDB, Cassandra, and Sharded ACID databases

Financial Portals – ACID database

Using Ruby

So, if you are now convinced (or rather interested to read on about MongoDB), you might

wonder where Ruby ts in anyway? Ruby is one of the languages that is being adopted the

fastest among all the new-age object oriented languages. But the big dierenator is that

it is a language that can be used, tweaked, and cranked in any way that you want – from

wring sweet smelling code to wring a domain-specic language (DSL)!

Ruby metaprogramming lets us easily adapt to any new technology, frameworks, API, and

libraries. In fact, most new services today always bundle a Ruby gem for easy integraon.

There are many Ruby implementaons available today (somemes called Rubies) such as,

the original MRI, JRuby, Rubinius, MacRuby, MagLev, and the Ruby Enterprise Edion. Each

of them has a slightly dierent avors, much like the dierent avors of Linux.

I oen have to "sell" Ruby to nontechnical or technically biased people. This simple

experiment never fails:

When I code in Ruby, I can guarantee, "My grandmother can read my code". Can any other

language guarantee that? The following is a simple code in C:

/* A simple snippet of code in C */

for (i = 0; i < 10; i++) {

printf("Hi");

}

And now the same code in Ruby:

# The same snippet of code in Ruby

10.times do

print "hi"

end

Preface

[ 5 ]

There is no way that the Ruby code can be misinterpreted. Yes, I am not saying that you

cannot write complex and complicated code in Ruby, but most code is simple to read and

understand. Frameworks, such as Rails and Sinatra, use this feature to ensure that the code

we see is readable! There is a lot of code under the cover which enables this though. For

example, take a look at the following Ruby code:

# library.rb

class Library

has_many :books

end

# book.rb

class Book

belongs_to :library

end

It's quite understandable that "A library has many books" and that "A book belongs to

a library".

The really fun part of working in Ruby (and Rails) is the nesse in the language. For example,

in the small Rails code snippet we just saw, books is plural and library is singular. The

framework infers the model Book model by the symbol :books and infers the Library

model from the symbol :library – it goes the distance to make code readable.

As a language, Ruby is free owing with relaxed rules – you can dene a method call true in

your calls that could return false! Ruby is a language where you do whatever you want as

long as you know its impact. It's a human language and you can do the same thing in many

dierent ways! There is no right or wrong way; there is only a more ecient way. Here is a

simple example to demonstrate the power of Ruby! How do you calculate the sum of all the

numbers in the array [1, 2, 3, 4, 5]?

The non-Ruby way of doing this in Ruby is:

sum = 0

for element in [1, 2, 3, 4, 5] do

sum += element

end

The not-so-much-fun way of doing this in Ruby could be:

sum = 0

[1, 2, 3, 4, 5].each do |element|

sum += element

end

Preface

[ 6 ]

The normal-fun way of doing this in Ruby is:

[1, 2, 3, 4, 5].inject(0) { |sum, element| sum + element }

Finally, the kick-ass way of doing this in Ruby is either one of the following:

[1, 2, 3, 4, 5].inject(&:+)

[1, 2, 3, 4, 5].reduce(:+)

There you have it! So many dierent ways of doing the same thing in Ruby – but noce how

most Ruby code gets done in one line.

Enjoy Ruby!

What this book covers

Chapter 1, Installing MongoDB and Ruby, describes how to install MongoDB on Linux and

Mac OS. We shall learn about the various MongoDB ulies and their usage. We then install

Ruby using RVM and also get a brief introducon to rbenv.

Chapter 2, Diving Deep into MongoDB, explains the various concepts of MongoDB and how it

diers from relaonal databases. We learn various techniques, such as inserng and updang

documents and searching for documents. We even get a brief introducon to Map/Reduce.

Chapter 3, MongoDB Internals, shares some details about what BSON is, usage of JavaScript,

the global write lock, and why there are no joins or transacons supported in MongoDB. If

you are a person in the fast lane, you can skip this chapter.

Chapter 4, Working Out Your Way with Queries, explains how we can query MongoDB

documents and search inside dierent data types such as arrays, hashes, and embedded

documents. We learn about the various query opons and even regular expression

based searching.

Chapter 5, Ruby DataMappers: Ruby and MongoDB Go Hand in Hand, provides details

on how to use Ruby data mappers to query MongoDB. This is our rst introducon to

MongoMapper and Mongoid. We learn how to congure both of them, query using

these data mappers, and even see some basic comparison between them.

Chapter 6, Modeling Ruby with Mongoid, introduces us to data models, Rails, Sinatra, and how

we can model data using MongoDB data mappers. This is the core of the web applicaon and

we see various ways to model data, organize our code, and query using Mongoid.

Preface

[ 7 ]

Chapter 7, Achieving High Performance on Your Ruby Applicaon with MongoDB,

explains the importance of proling and ensuring beer performance right from the

start of developing web applicaons using Ruby and MongoDB. We learn some best

pracces and concepts concerning the performance of web applicaons, tools, and

methods which monitor the performance of our web applicaon.

Chapter 8, Rack, Sinatra, Rails, and MongoDB – Making Use of them All, describes in

detail how to build the full web applicaon in Rails and Sinatra using Mongoid. We

design the logical ow, the views, and even learn how to test our code and document it.

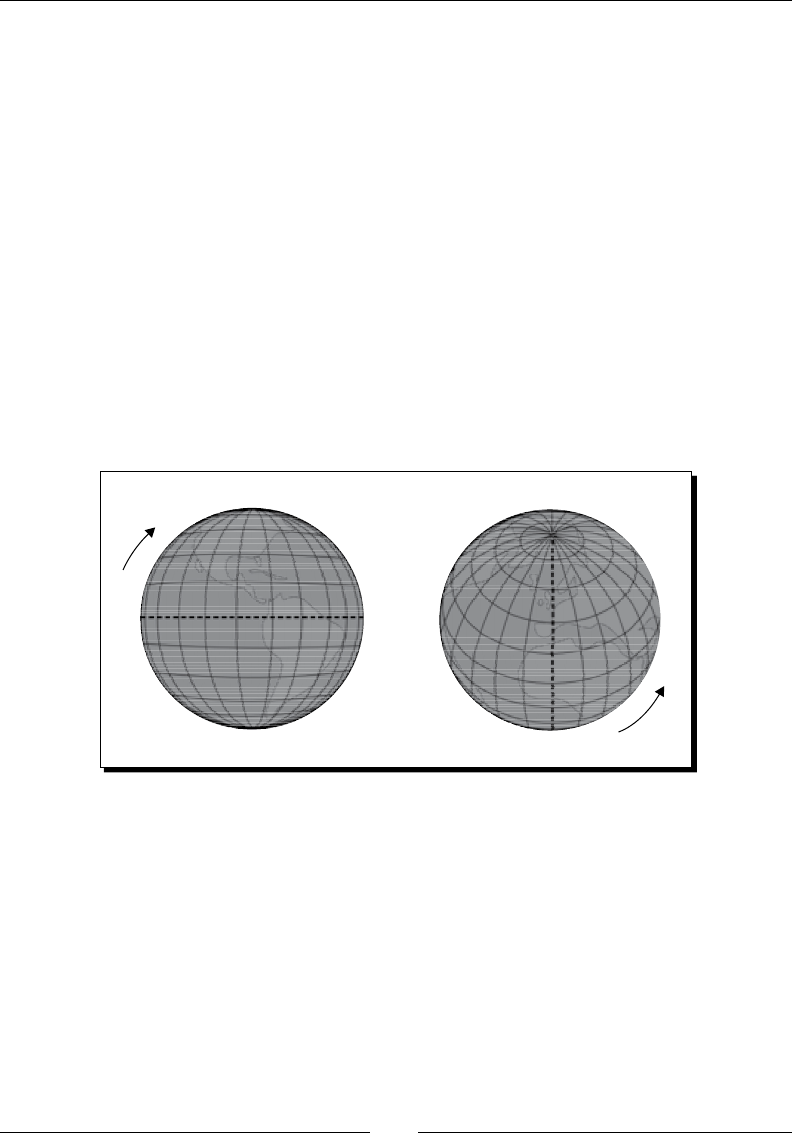

Chapter 9, Going Everywhere – Geospaal Indexing with MongoDB, helps us understand

geolocaon concepts. We learn how to set up geospaal indexes, get introduced to

geocoding, and learn about geolocaon spherical queries.

Chapter 10, Scaling MongoDB, provides details on how we scale MongoDB using replica

sets. We learn about sharding, replicaon, and how we can improve performance using

MongoDB map/reduce.

Appendix, Pop Quiz Answers, provides answers to the quizzes present at the end of chapters.

What you need for this book

This book would require the following:

MongoDB version 2.0.2 or latest

Ruby version 1.9 or latest

RVM (for Linux and Mac OS only)

DevKit (for Windows only)

MongoMapper

Mongoid

And other gems, of which I will inform you as we need them!

Who this book is for

This book assumes that you are experienced in Ruby and web development skills - HTML,

and CSS. Having knowledge of using NoSQL will help you get through the concepts quicker,

but it is not mandatory. No prior knowledge of MongoDB required.

Preface

[ 8 ]

Conventions

In this book, you will nd several headings appearing frequently.

To give clear instrucons of how to complete a procedure or task, we use:

Time for action – heading

1. Acon 1

2. Acon 2

3. Acon 3

Instrucons oen need some extra explanaon so that they make sense, so they are

followed with:

What just happened?

This heading explains the working of tasks or instrucons that you have just completed.

You will also nd some other learning aids in the book, including:

Pop quiz – heading

These are short mulple choice quesons intended to help you test your own understanding.

Have a go hero – heading

These set praccal challenges and give you ideas for experimenng with what you have learned.

You will also nd a number of styles of text that disnguish between dierent kinds of

informaon. Here are some examples of these styles, and an explanaon of their meaning.

Code words in text are shown as follows: "We can include other contexts through the use of

the include direcve."

A block of code is set as follows:

book = {

name: "Oliver Twist",

author: "Charles Dickens",

publisher: "Dover Publications",

published_on: "December 30, 2002",

category: ['Classics', 'Drama']

}

Preface

[ 9 ]

When we wish to draw your aenon to a parcular part of a code block, the relevant lines

or items are set in bold:

function(key, values) {

var result = {votes: 0}

values.forEach(function(value) {

result.votes += value.votes;

});

return result;

}

Any command-line input or output is wrien as follows:

$ curl -L get.rvm.io | bash -s stable

New terms and important words are shown in bold. Words that you see on the screen, in

menus or dialog boxes for example, appear in the text like this: "clicking the Next buon

moves you to the next screen".

Warnings or important notes appear in a box like this.

Tips and tricks appear like this.

Reader feedback

Feedback from our readers is always welcome. Let us know what you think about this

book—what you liked or may have disliked. Reader feedback is important for us to

develop tles that you really get the most out of.

To send us general feedback, simply send an e-mail to feedback@packtpub.com, and

menon the book tle through the subject of your message.

If there is a topic that you have experse in and you are interested in either wring or

contribung to a book, see our author guide on www.packtpub.com/authors.

Preface

[ 10 ]

Customer support

Now that you are the proud owner of a Packt book, we have a number of things to help

you to get the most from your purchase.

Downloading the example code

You can download the example code les for all Packt books you have purchased from

your account at http://www.packtpub.com. If you purchased this book elsewhere,

you can visit http://www.packtpub.com/support and register to have the les

e-mailed directly to you.

Errata

Although we have taken every care to ensure the accuracy of our content, mistakes do

happen. If you nd a mistake in one of our books—maybe a mistake in the text or the

code—we would be grateful if you would report this to us. By doing so, you can save

other readers from frustraon and help us improve subsequent versions of this book.

If you nd any errata, please report them by vising http://www.packtpub.com/

support, selecng your book, clicking on the errata submission form link, and entering

the details of your errata. Once your errata are veried, your submission will be accepted

and the errata will be uploaded to our website, or added to any list of exisng errata,

under the Errata secon of that tle.

Piracy

Piracy of copyright material on the Internet is an ongoing problem across all media. At

Packt, we take the protecon of our copyright and licenses very seriously. If you come

across any illegal copies of our works, in any form, on the Internet, please provide us

with the locaon address or website name immediately so that we can pursue a remedy.

Please contact us at copyright@packtpub.com with a link to the suspected

pirated material.

We appreciate your help in protecng our authors, and our ability to bring you

valuable content.

Questions

You can contact us at questions@packtpub.com if you are having a problem with

any aspect of the book, and we will do our best to address it.

1

Installing MongoDB and Ruby

MongoDB and Ruby have both been created as a result of technology geng

complicated. They both try to keep it simple and manage all the complicated

tasks at the same me. MongoDB manages "humongous" data and Ruby

is fun. Working together, they form a great bond that gives us what most

programmers desire—a fun way to build large applicaons!

Now that your interest has increased, we should rst set up our system. In this chapter,

we will see how to do the following:

Install Ruby using RVM

Install MongoDB

Congure MongoDB

Set up the inial playground using MongoDB tools

But rst, what are the basic system requirements for installing Ruby and MongoDB? Do we

need a heavy-duty server? Nah! On the contrary, any standard workstaon or laptop will be

enough. Ensure that you have at least 1 GB memory and more than 32 GB disk space.

Did you say operang system? Ruby and MongoDB are both cross-plaorm compliant. This

means they can work on any avor of Linux (such as Ubuntu, Red Hat, Fedora, Gentoo, and

SuSE), Mac OS (such as Leopard, Snow Leopard, and Lion) or Windows (such as XP, 2000,

and 7).

Installing MongoDB and Ruby

[ 12 ]

If you are planning on using Ruby and MongoDB professionally, my personal

recommendaons for development are Mac OS or Linux. As we want to see detailed

instrucons, I am going to use examples for Ubuntu or Mac OS (and point out addional

instrucons for Windows whenever I can). While hosng MongoDB databases, I would

personally recommend using Linux.

It's true that Ruby is cross-plaorm, most Rubyists tend to

shy away from Windows as it's not always awless. There are

eorts underway to recfy this.

Let the games begin!

Installing Ruby

I recommend using RVM (Ruby Version Manager) for installing Ruby. The detailed

instrucons are available at http://beginrescueend.com/rvm/install/.

Incidentally, RVM was called Ruby Version Manager but its

name was changed to reect how much more it does today!

Using RVM on Linux or Mac OS

On Linux or Mac OS you can run this inial command to install RVM as follows:

$ curl -L get.rvm.io | bash -s stable

$ source ~/.rvm/scripts/'rvm'

Aer this has been successfully run, you can verify it yourself.

$ rvm list known

If you have successfully installed RVM, this should show you the enre list of Rubies

available. You will noce that there are quite a few implementaons of Ruby (MRI Ruby,

JRuby, Rubinius, REE, and so on) We are going to install MRI Ruby.

MRI Ruby is the "standard" or original Ruby implementaon.

It's called Matz Ruby Interpreter.

Chapter 1

[ 13 ]

The following is what you will see if you have successfully executed the previous command:

$ rvm list known

# MRI Rubies

[ruby-]1.8.6[-p420]

[ruby-]1.8.6-head

[ruby-]1.8.7[-p352]

[ruby-]1.8.7-head

[ruby-]1.9.1-p378

[ruby-]1.9.1[-p431]

[ruby-]1.9.1-head

[ruby-]1.9.2-p180

[ruby-]1.9.2[-p290]

[ruby-]1.9.2-head

[ruby-]1.9.3-preview1

[ruby-]1.9.3-rc1

[ruby-]1.9.3[-p0]

[ruby-]1.9.3-head

ruby-head

# GoRuby

goruby

# JRuby

jruby-1.2.0

jruby-1.3.1

jruby-1.4.0

jruby-1.6.1

jruby-1.6.2

jruby-1.6.3

jruby-1.6.4

jruby[-1.6.5]

jruby-head

Installing MongoDB and Ruby

[ 14 ]

# Rubinius

rbx-1.0.1

rbx-1.1.1

rbx-1.2.3

rbx-1.2.4

rbx[-head]

rbx-2.0.0pre

# Ruby Enterprise Edition

ree-1.8.6

ree[-1.8.7][-2011.03]

ree-1.8.6-head

ree-1.8.7-head

# Kiji

kiji

# MagLev

maglev[-26852]

maglev-head

# Mac OS X Snow Leopard Only

macruby[-0.10]

macruby-nightly

macruby-head

# IronRuby -- Not implemented yet.

ironruby-0.9.3

ironruby-1.0-rc2

ironruby-head

Isn't that beauful? So many Rubies and counng!

Chapter 1

[ 15 ]

Fun fact

Ruby is probably the only language that has a plural notaon!

When we work with mulple versions of Ruby, we collecvely

refer to them as "Rubies"!

Before we actually install any Rubies, we should congure the RVM packages that are

necessary for all the Rubies. These are the standard packages that Ruby can integrate with,

and we install them as follows:

$ rvm package install readline

$ rvm package install iconv

$ rvm package install zlib

$ rvm package install openssl

The preceding commands install some useful libraries for all the Rubies that we will

install. These libraries make it easier to work with the command line, internaonalizaon,

compression, and SSL. You can install these packages even aer Ruby installaon, but it's just

easier to install them rst.

$ rvm install 1.9.3

The preceding command will install Ruby 1.9.3 for us. However, while installing Ruby, we

also want to pre-congure it with the packages that we have installed. So, here is how we do

it, using the following commands:

$ export rvm_path=~/.rvm

$ rvm install 1.9.3 --with-readline-dir=$rvm_path/usr --with-iconv-

dir=$rvm_path/usr --with-zlib-dir=$rvm_path/usr --with-openssl-dir=$rvm_

path/usr

The preceding commands will miraculously install Ruby 1.9.3 congured with the packages

we have installed. We should see something similar to the following on our screen:

$ rvm install 1.9.3

Installing Ruby from source to: /Users/user/.rvm/rubies/ruby-1.9.3-p0,

this may take a while depending on your cpu(s)...

Installing MongoDB and Ruby

[ 16 ]

ruby-1.9.3-p0 - #fetching

ruby-1.9.3-p0 - #downloading

ruby-1.9.3-p0, this may take a while depending on your connection...

...

ruby-1.9.3-p0 - #extracting

ruby-1.9.3-p0 to /Users/user/.rvm/src/ruby-1.9.3-p0

ruby-1.9.3-p0 - #extracted to /Users/user/.rvm/src/ruby-1.9.3-p0

ruby-1.9.3-p0 - #configuring

ruby-1.9.3-p0 - #compiling

ruby-1.9.3-p0 - #installing

...

Install of ruby-1.9.3-p0 - #complete

Of course, whenever we start our machine, we do want to load RVM, so do add this line in

your startup prole script:

$ echo '[[ -s "$HOME/.rvm/scripts/rvm" ]] && . "$HOME/.rvm/scripts/rvm" #

Load RVM function' >> ~/.bash_profile

This will ensure that Ruby is loaded when you log in.

$ rvm requirements is a command that can assist you on

custom packages to be installed. This gives instrucons based

on the operang system you are on!

The RVM games

Conguring RVM for a project can be done as follows:

$ rvm –create –rvmrc use 1.9.3%myproject

The previous command allows us to congure a gemset for our project. So, when we move

to this project, it has a .rvmrc le that gets loaded and voila — our very own

custom workspace!

Chapter 1

[ 17 ]

A gemset, as the name suggests, is a group of gems that are loaded for a parcular version

of Ruby or a project. As we can have mulple versions of the same gem on a machine, we

can congure a gemset for a parcular version of Ruby and for a parcular version of the

gem as well!

$ cd /path/to/myproject

Using ruby 1.9.2 p180 with gemset myproject

In case you need to install something via RVM with sudo

access, remember to use rvmsudo instead of sudo!

The Windows saga

RVM does not work on Windows, instead you can use pik. All the detailed instrucons

to install Ruby are available at http://rubyinstaller.org/. It is prey simple and

a one-click installer.

Do remember to install DevKit as it is required for compiling

nave gems.

Using rbenv for installing Ruby

Just like all good things, RVM becomes quite complex because the community started

contribung heavily to it. Some people wanted just a Ruby version manager, so rbenv was

born. Both are quite popular but there are quite a few dierences between rbenv and RVM.

For starters, rbenv does not need to be loaded into the shell and does not override any shell

commands. It's very lightweight and unobtrusive. Install it by cloning the repository into your

home directory as .rbenv. It is done as follows:

$ cd

$ git clone git://github.com/sstephenson/rbenv.git .rbenv

Add the preceding command to the system path, that is, the $PATH variable and you're

all set.

rbenv works on a very simple concept of shims. Shims are scripts that understand what

version of Ruby we are interested in. All the versions of Ruby should be kept in the $HOME/.

rbenv/versions directory. Depending on which Ruby version is being used, the shim

inserts that parcular path at the start of the $PATH variable. This way, that Ruby version

is picked up!

Installing MongoDB and Ruby

[ 18 ]

This enables us to compile the Ruby source code too (unlike RVM where we have to specify

ruby-head).

For more informaon on rbenv, see https://github.com/

sstephenson/rbenv.

Installing MongoDB

MongoDB installers are a bunch of binaries and libraries packaged in an archive. All you

need to do is download and extract the archive. Could this be any simpler?

On Mac OS, you have two popular package managers Homebrew and MacPorts. If you

are using Homebrew, just issue the following command:

$ brew install MongoDB

If you don't have brew installed, it is strongly recommended to install it. But don't fret.

Here is the manual way to install MongoDB on any Linux, Mac OS, or Windows machine:

1. Download MongoDB from http://www.mongodb.org/downloads.

2. Extract the .tgz le to a folder (preferably which is in your system path).

It's done!

On any Linux Shell, you can issue the following commands to download and install. Be sure

to append the /path/to/MongoDB/bin to your $PATH variable:

$ cd /usr/local/

$ curl http://fastdl.mongodb.org/linux/mongodb-linux-i686-2.0.2.tgz >

mongo.tgz

$ tar xf mongo.tgz

$ ln –s mongodb-linux-i686-2.0.2 MongoDB

For Windows, you can simply download the ZIP le and extract it in a folder. Ensure that

you update the </path/to/MongoDB/bin> in your system path.

MongoDB v1.6, v1.8, and v2.x are considerably dierent. Be

sure to install the latest version. Over the course of wring this

book, v2.0 was released and the latest version is v2.0.2. It is

that version that this book will reference.

Chapter 1

[ 19 ]

Conguring the MongoDB server

Before we start the MongoDB server, it's necessary to congure the path where we want to

store our data, the interface to listen on, and so on. All these conguraons are stored in

mongod.conf. The default mongod.conf looks like the following code and is stored at the

same locaon where MongoDB is installed—in our case /usr/local/mongodb:

# Store data in /usr/local/var/mongodb instead of the default /data/db

dbpath = /usr/local/var/mongodb

# Only accept local connections

bind_ip = 127.0.0.1

dbpath is the locaon where the data will be stored. Tradionally, this used to be /data/db

but this has changed to /usr/local/var/mongodb. MongoDB will create this dbpath if

you have not created it already.

bind_ip is the interface on which the server will run. Don't mess with this entry unless

you know what you are doing!

Write-ahead logging is a technique to ensure durability and

atomicity in database systems. Before actually wring to the

database, the informaon (such as redo and undo) is wrien to a

log (called the journal). This ensures that recovering from a crash

is credible and fast. We shall learn more about this in the book.

Starting MongoDB

We can start the MongoDB server using the following command:

$ sudo mongod --config /usr/local/mongodb/mongod.conf

Remember that if we don't give the --config parameter, the default dbpath will be

taken as /data/db.

When you start the server, if all is well, you should see something like the following:

$ sudo mongod --config /usr/local/mongodb/mongod.conf

Sat Sep 10 15:46:31 [initandlisten] MongoDB starting : pid=14914

port=27017 dbpath=/usr/local/var/mongodb 64-bit

Installing MongoDB and Ruby

[ 20 ]

Sat Sep 10 15:46:31 [initandlisten] db version v2.0.2, pdfile version 4.5

Sat Sep 10 15:46:31 [initandlisten] git version:

c206d77e94bc3b65c76681df5a6b605f68a2de05

Sat Sep 10 15:46:31 [initandlisten] build sys info: Darwin erh2.10gen.

cc 9.6.0 Darwin Kernel Version 9.6.0: Mon Nov 24 17:37:00 PST 2008;

root:xnu-1228.9.59~1/RELEASE_I386 i386 BOOST_LIB_VERSION=1_40

Sat Sep 10 15:46:31 [initandlisten] journal dir=/usr/local/var/mongodb/

journal

Sat Sep 10 15:46:31 [initandlisten] recover : no journal files present,

no recovery needed

Sat Sep 10 15:46:31 [initandlisten] waiting for connections on port 27017

Sat Sep 10 15:46:31 [websvr] web admin interface listening on port 28017

The preceding process does not terminate as it is running in the foreground! Some

explanaons are due here:

The server started with pid 14914 on port 27017 (default port)

The MongoDB version is 2.0.2

The journal path is /usr/local/var/mongodb/journal (It also menons that

there is no current journal le, as this is the rst me we are starng this up!)

The web admin port is on 28017

The MongoDB server has some prey interesng command-line

opons:–v is verbose. –vv is more verbose and –vvv is even

more verbose. Include mulple mes for more verbosity!

There are plenty of command line opons that allow us to use MongoDB in various ways.

For example:

1. --jsonp allows JSONP access.

2. --rest turns on REST API.

3. Master/Slave, opons, replicaon opons, and even sharing opons

(We shall see more in Chapter 10, Scaling MongoDB).

Chapter 1

[ 21 ]

Stopping MongoDB

Press Ctrl+C if the process is running in the foreground. If it's running as a daemon, it has

its standard startup script. On Linux avors such as Ubuntu, you have upstart scripts that

start and stop the mongod daemon. On Mac, you have launchd and launchct commands

that can start and stop the daemon. On other avors of Linux, you would nd more of the

resource scripts in the /etc/init.d directory. On Windows, the Services in the Control

Panel can control the daemon process.

The MongoDB CLI

Along with the MongoDB server binary, there are plenty of other ulies too that help us in

administraon, monitoring, and management of MongoDB.

Understanding JavaScript Object Notation (JSON)

Even before we see how to use MongoDB ulies, it's important to know how informaon is

stored. We shall study a lot more of the object model in Chapter 2, Diving Deep into MongoDB.

What is a JavaScript object? Surely you've heard of JavaScript Object Notaon (JSON).

MongoDB stores informaon similar to this. (It's called Binary JSON (BSON), which we shall

read more about in Chapter 3, The MongoDB Internals). BSON, in addion to JSON formats,

is ideally suited for "Document" storage. Don't worry, more informaon on this later!

So, if you want to save informaon, you simply use the JSON protocol:

{

name : 'Gautam Rege',

passion: [ 'Ruby', 'MongoDB' ],

company : {

name : "Josh Software Private Limited",

country : 'India'

}

}

The previous example shows us how to store informaon:

String: "" or ''

Integer: 10

Float: 10.1

Array: ['1', 2]

Hash: {a: 1, b: 2}

Installing MongoDB and Ruby

[ 22 ]

Connecting to MongoDB using Mongo

The Mongo client ulity is used to connect to MongoDB database. Considering that this

is a Ruby and MongoDB book, it is a ulity that we shall use rarely (because we shall be

accessing the database using Ruby). The Mongo CLI client, however, is indeed useful for

tesng out basics.

We can connect to MongoDB databases in various ways:

$ mongo book

$ mongo 192.168.1.100/book

$ mongo db.myserver.com/book

$ mongo 192.168.1.100:9999/book

In the preceding case, we connect to a database called book on localhost, on a remote

server, or on a remote server on a dierent port. When you connect to a database, you

should see the following:

$ mongo book

MongoDB shell version: 2.0.2

connecting to: book

>

Saving information

To save data, use the JavaScript object and execute the following command:

> db.shelf.save( { name: 'Gautam Rege',

passion : [ 'Ruby', 'MongoDB']

})

>

The previous command saves the data (that is, usually called "Document") into the collecon

shelf. We shall talk more about collecons and other terminologies in Chapter 3, MongoDB

Internals. A collecon can vaguely be compared to tables.

Chapter 1

[ 23 ]

Retrieving information

We have various ways to retrieve the previously stored informaon:

Fetch the rst 10 objects from the book database (also called a collecon),

as follows:

> db.shelf.find()

{ "_id" : ObjectId("4e6bb98a26e77d64db8a3e89"), "name" : "Gautam

Rege", "passion" : [ "Ruby", MongoDB" ] }

>

Find a specic record of the name aribute. This is achieved by execung the

following command:

> db.shelf.find( { name : 'Gautam Rege' })

{ "_id" : ObjectId("4e6bb98a26e77d64db8a3e89"), "name" : "Gautam

Rege", "passion" : [ "Ruby", MongoDB" ] }

>

So far so good! But you may be wondering what the big deal is. This is similar to a select

query I would have red anyway. Well, here is where things start geng interesng.

Find records by using regular expressions! This is achieved by execung the

following command:

$ db.shelf.find( { name : /Rege/ })

{ "_id" : ObjectId("4e6bb98a26e77d64db8a3e89"), "name" : "Gautam

Rege", "passion" : [ "Ruby", MongoDB" ] }

>

Find records by using regular expressions using the case-insensive ag! This is

achieved by execung the following command:

$ db.shelf.find( { name : /rege/i })

{ "_id" : ObjectId("4e6bb98a26e77d64db8a3e89"), "name" : "Gautam

Rege", "passion" : [ "Ruby", MongoDB" ] }

>

As we can see, it's easy when we have programming constructs mixed with database

constructs with a dash of regular expressions.

Installing MongoDB and Ruby

[ 24 ]

Deleting information

No surprises here!

To remove all the data from book, execute the following command:

> db.shelf.remove()

>

To remove specic data from book, execute the following command:

> db.shelf.remove({name : 'Gautam Rege'})

>

Exporting information using mongoexport

Ever wondered how to extract informaon from MongoDB? It's mongoexport! What is

prey cool is that the Mongo data transfer protocol is all in JSON/BSON formats. So what?

- you ask. As JSON is now a universally accepted and common format of data transfer,

you can actually export the database, or the collecon, directly in JSON format — so even

your web browser can process data from MongoDB. No more three-er applicaons! The

opportunies are innite!

Ok, back to basics. Here is how you can export data from MongoDB:

$ mongoexport –d book –c shelf

connected to: 127.0.0.1

{ "_id" : { "$oid" : "4e6c45b81cb76a67a0363451" }, "name" : "Gautam

Rege", "passion" : [ "Ruby", MongoDB" ]}

exported 1 records

This couldn't be simpler, could it? But wait, there's more. You can export this data into a

CSV le too!

$ mongoexport -d book -c shelf -f name,passion --csv -o test.csv

The preceding command saves data in a CSV le. Similarly, you can export data as a JSON

array too!

$ mongoexport -d book -c shelf --jsonArray

connected to: 127.0.0.1

[{ "_id" : { "$oid" : "4e6c61a05ff70cac810c6996" }, "name" : "Gautam

Rege", "passion" : [ "Ruby", "MongoDB" ] }]

exported 1 records

Chapter 1

[ 25 ]

Importing data using mongoimport

Wasn't this expected? If there is a mongoexport, you must have a mongoimport! Imagine

when you want to import informaon; you can do so in a JSON array, CSV, TSV or plain JSON

format. Simple and sweet!

Managing backup and restore using mongodump and

mongorestore

Backups are important for any database and MongoDB is no excepon. mongodump dumps

the enre database or databases in binary JSON format. We can store this and use this later to

restore it from the backup. This is the closest resemblance to mysqldump! It is done as follows:

$ mongodump -dconfig

connected to: 127.0.0.1

DATABASE: config to dump/config

config.version to dump/config/version.bson

1 objects

config.system.indexes to dump/config/system.indexes.bson

14 objects

...

config.collections to dump/config/collections.bson

1 objects

config.changelog to dump/config/changelog.bson

10 objects

$

$ ls dump/config/

changelog.bson databases.bson mongos.bson system.indexes.bson

chunks.bson lockpings.bson settings.bson version.bson

collections.bson locks.bson shards.bson

Now that we have backed up the database, in case we need to restore it, it is just a maer

of supplying the informaon to mongorestore, which is done as follows:

$ mongorestore -dbkp1 dump/config/

connected to: 127.0.0.1

dump/config/changelog.bson

Installing MongoDB and Ruby

[ 26 ]

going into namespace [bkp1.changelog]

10 objects found

dump/config/chunks.bson

going into namespace [bkp1.chunks]

7 objects found

dump/config/collections.bson

going into namespace [bkp1.collections]

1 objects found

dump/config/databases.bson

going into namespace [bkp1.databases]

15 objects found

dump/config/lockpings.bson

going into namespace [bkp1.lockpings]

5 objects found

...

1 objects found

dump/config/system.indexes.bson

going into namespace [bkp1.system.indexes]

{ key: { _id: 1 }, ns: "bkp1.version", name: "_id_" }

{ key: { _id: 1 }, ns: "bkp1.settings", name: "_id_" }

{ key: { _id: 1 }, ns: "bkp1.chunks", name: "_id_" }

{ key: { ns: 1, min: 1 }, unique: true, ns: "bkp1.chunks", name: "ns_1_

min_1" }

...

{ key: { _id: 1 }, ns: "bkp1.databases", name: "_id_" }

{ key: { _id: 1 }, ns: "bkp1.collections", name: "_id_" }

14 objects found

Saving large les using mongoles

The database should be able to store a large amount of data. Typically, the maximum size of

JSON objects stores 4 MB (and in v1.7 onwards, 16 MB). So, can we store videos and other

large documents in MongoDB? That is where the mongofiles ulity helps.

MongoDB uses GridFS specicaon for storing large les. Language bindings are available to

store large les. GridFS splits larger les into chunks and maintains all the metadata in the

collecon. It's interesng to note that GridFS is just a specicaon, not a mandate and all

MongoDB drivers adhere to this voluntarily.

Chapter 1

[ 27 ]

To manage large les directly in a database, we use the mongofiles ulity.

$ mongofiles -d book -c shelf put /home/gautam/Relax.mov

connected to: 127.0.0.1

added file: { _id: ObjectId('4e6c6f9cc7bd0bf42f31aa3b'), filename:

"/Users/gautam/Relax.mov", chunkSize: 262144, uploadDate: new

Date(1315729317190), md5: "43883ace6022c8c6682881b55e26e745", length:

49120795 }

done!

Noce that 47 MB of data was saved in the database. I wouldn't want to leave you in the

dark, so here goes a lile bit of explanaon. GridFS creates an fs collecon that has two

more collecons called chunks and files. You can retrieve this informaon from MongoDB

from the command line or using Mongo CLI.

$ mongofiles –d book list

connected to: 127.0.0.1

/Users/gautam/Downloads/Relax.mov 49120795

Let's use Mongo CLI to fetch this informaon now. This can be done as follows:

$ mongo

MongoDB shell version: 1.8.3

connecting to: test

> use book

switched to db book

> db.fs.chunks.count()

188

> db.fs.files.count()

1

> db.fs.files.findOne()

{

"_id" : ObjectId("4e6c6f9cc7bd0bf42f31aa3b"),

"filename" : "/Users/gautam/Downloads/Relax.mov",

"chunkSize" : 262144,

Installing MongoDB and Ruby

[ 28 ]

"uploadDate" : ISODate("2011-09-11T08:21:57.190Z"),

"md5" : "43883ace6022c8c6682881b55e26e745",

"length" : 49120795

}

>

bsondump

This is a ulity that helps analyze BSON dumps. For example, if you want to lter all the

objects from a BSON dump of the book database, you could run the following command:

$ bsondump --filter "{name:/Rege/}" dump/book/shelf.bson

This command would analyze the enre dump and get all the objects where name has the

specied value in it! The other very nice feature of bsondump is if we have a corrupted dump

during any restore, we can use the objcheck ag to ignore all the corrupt objects.

Installing Rails/Sinatra

Considering that we aim to do web development with Ruby and MongoDB, Rails or Sinatra

cannot be far behind.

Rails 3 packs a punch. Sinatra was born because Rails 2.x was a really

heavy framework. However, Rails 3 has Metal that can be congured

with only what we need in our applicaon framework. So Rails 3 can be

as lightweight as Sinatra and also get the best of the support libraries.

So Rails 3 it is, if I have to choose between Ruby web frameworks!

Installing Rails 3 or Sinatra is as simple as one command, as follows:

$ gem install rails

$ gem install sinatra

At the me of wring this chapter, Rails 3.2 had just been released in

producon mode. That is what we shall use!

Chapter 1

[ 29 ]

Summary

What we have learned so far is about geng comfortable with Ruby and MongoDB. We

installed Ruby using RVM, learned a lile about rbenv and then installed MongoDB. We saw

how to congure MongoDB, start it, stop it, and nally we played around with the various

MongoDB ulies to dump informaon, restore it, save large les and even export to CSV

or JSON.

In the next chapter, we shall dive deep into MongoDB. We shall learn how to work with

documents, save them, fetch them, and search for them — all this using the mongo ulity.

We shall also see a comparison with SQL databases.

2

Diving Deep into MongoDB

Now that we have seen the basic les and CLI ulies available with MongoDB,

we shall now use them. We shall see how these objects are modeled via Mongo

CLI as well as from the Ruby console.

In this chapter we shall learn the following:

Modeling the applicaon data.

Mapping it to MongoDB objects.

Creang embedded and relaonal objects.

Fetching objects.

How does this dier from the SQL way?

Take a brief look at a Map/Reduce, with an example.

We shall start modeling an applicaon, whereby we shall learn various constructs of

MongoDB and then integrate it into Rails and Sinatra. We are going to build the Sodibee

(pronounced as |saw-d-bee|) Library Manager.

Books belong to parcular categories including Fiction, Non-fiction, Romance,

Self-learning, and so on. Books belong to an author and have one publisher.

Books can be leased or bought. When books are bought or leased, the customer's details

(such as name, address, phone, and e-mail) are registered along with the list of books

purchased or leased.

Diving Deep into MongoDB

[ 32 ]

An inventory maintains the quanty of each book with the library, the quanty sold and the

number of mes it was leased.

Over the course of this book, we shall evolve this applicaon into a full-edged web

applicaon powered by Ruby and MongoDB. In this chapter we will learn the various

constructs of MongoDB.

Creating documents

Let's rst see how we can create documents in MongoDB. As we have briey seen, MongoDB

deals with collecons and documents instead of tables and rows.

Time for action – creating our rst document

Suppose we want to create the book object having the following schema:

book = {

name: "Oliver Twist",

author: "Charles Dickens",

publisher: "Dover Publications",

published_on: "December 30, 2002",

category: ['Classics', 'Drama']

}

Downloading the example code

You can download the example code les for all Packt books you have

purchased from your account at http://www.packtpub.com. If you

purchased this book elsewhere, you can visit http://www.packtpub.

com/support and register to have the les e-mailed directly to you.

On the Mongo CLI, we can add this book object to our collecon using the following command:

> db.books.insert(book)

Suppose we also add the shelf collecon (for example, the oor, the row, the column the

shelf is in, the book indexes it maintains, and so on that are part of the shelf object), which

has the following structure:

shelf : {

name : 'Fiction',

location : { row : 10, column : 3 },

floor : 1

lex : { start : 'O', end : 'P' },

}

Chapter 2

[ 33 ]

Remember, it's quite possible that a few years down the line, some shelf instances may

become obsolete and we might want to maintain their record. Maybe we could have another

shelf instance containing only books that are to be recycled or donated. What can we do?

We can approach this as follows:

The SQL way: Add addional columns to the table and ensure that there is a default

value set in them. This adds a lot of redundancy to the data. This also reduces the

performance a lile and considerably increases the storage. Sad but true!

The NoSQL way: Add the addional elds whenever you want. The following are the

MongoDB schemaless object model instances:

> db.book.shelf.find()

{ "_id" : ObjectId("4e81e0c3eeef2ac76347a01c"), "name" : "Fiction",

"location" : { "row" : 10, "column" : 3 }, "floor" : 1 }

{ "_id" : ObjectId("4e81e0fdeeef2ac76347a01d"), "name" : "Romance",

"location" : { "row" : 8, "column" : 5 }, "state" : "window broken",