SMI Tech Guide 2015


Technical Guide

An Assessment of College and Career Math

Readiness Across Grades K–Algebra II


Common Core State Standards copyright © 2010 National Governors Association Center for Best Practices and Council of Chief State School Officers.

All rights reserved.

Excepting those parts intended for classroom use, no part of this publication may be reproduced in whole or in part, or stored in a retrieval system, or transmitted

in any form or by any means, electronic, mechanical, photocopying, recording, or otherwise, without written permission of the publisher. For information regarding

permission, write to Scholastic Inc., 557 Broadway, New York, NY 10012. Scholastic Inc. grants teachers who have purchased SMI College & Career permission to

reproduce from this book those pages intended for use in their classrooms. Notice of copyright must appear on all copies of copyrighted materials.

Copyright © 2014, 2012, 2011 by Scholastic Inc.

All rights reserved. Published by Scholastic Inc.

ISBN-13: 978-0-545-79640-8

ISBN-10: 0-545-79640-7

SCHOLASTIC, SCHOLASTIC ACHIEVEMENT MANAGER, and associated logos are trademarks and/or registered trademarks of Scholastic Inc.

QUANTILE, QUANTILE FRAMEWORK, LEXILE, and LEXILE FRAMEWORK are registered trademarks of MetaMetrics, Inc.

Other company names, brand names, and product names are the property and/or trademarks of their respective owners.


Copyright © 2014 by Scholastic Inc. All rights reserved.

Table of Contents

Introduction

Features of Scholastic Math Inventory College & Career

Rationale for and Uses of Scholastic Math Inventory College & Career

Limitations of Scholastic Math Inventory College & Career

Theoretical Foundations and Validity of the Quantile Framework for Mathematics

The Quantile Framework for Mathematics Taxonomy

The Quantile Framework Field Study

The Quantile Scale

Validity of the Quantile Framework for Mathematics

Relationship of Quantile Framework to Other Measures of Mathematics Understanding

Using SMI College & Career

Administering the Test

Interpreting Scholastic Math Inventory College & Career Scores

Using SMI College & Career Results

SMI College & Career Reports to Support Instruction

Development of SMI College & Career

Specifications of the SMI College & Career Item Bank

SMI College & Career Item Development

SMI College & Career Computer-Adaptive Algorithm

Reliability

QSC Quantile Measure—Measurement Error

SMI College & Career Standard Error of Measurement

Validity

Content Validity

Construct-Identification Validity

Conclusion

References

Appendices

Appendix 1: QSC Descriptions and Standards Alignment

Appendix 2: Norm Reference Table (spring percentiles)

Appendix 3: Reliability Studies

Appendix 4: Validity Studies


Figures

FIGURE 1 ........................................11

Growth Report.

FIGURE 2 ........................................28

Rasch achievement estimates of N = 9,656 students with

complete data.

FIGURE 3 ........................................29

Box and whisker plot of the Rasch ability estimates (using

the Quantile scale) for the final sample of students with

outfit statistics less than 1.8 (N = 9,176).

FIGURE 4 ........................................30

Box and whisker plot of the Rasch ability estimates (using

the Quantile scale) of the 685 Quantile Framework items for

the final sample of students (N = 9,176).

FIGURE 5 ........................................32

Relationship between reading and mathematics scale scores

on a norm-referenced assessment linked to the Lexile scale

in reading.

FIGURE 6 ........................................33

Relationship between grade level and mathematics

performance on the Quantile Framework field study and

other mathematics assessments.

FIGURE 7 ........................................41

A continuum of mathematical demand for Kindergarten

through precalculus textbooks (box plot percentiles: 5th,

25th, 50th, 75th, and 95th).

FIGURE 8 ........................................46

SMI College & Career’s four-function calculator and scientific

calculator.

FIGURE 9 ........................................47

SMI College & Career Grades 3–5 Formula Sheet.

FIGURE 10.......................................47

SMI College & Career Grades 6–8 Formula Sheet.

FIGURE 11.......................................48

SMI College & Career Grades 9–11 Formula Sheets.

FIGURE 12.......................................51

Sample administration of SMI College & Career for a fourth-

grade student.

FIGURE 13.......................................53

Normal distribution of scores described in percentiles,

stanines, and NCEs.

FIGURE 14.......................................57

Student-mathematical demand discrepancy and predicted

success rate.

FIGURE 15.......................................63

Instructional Planning Report.

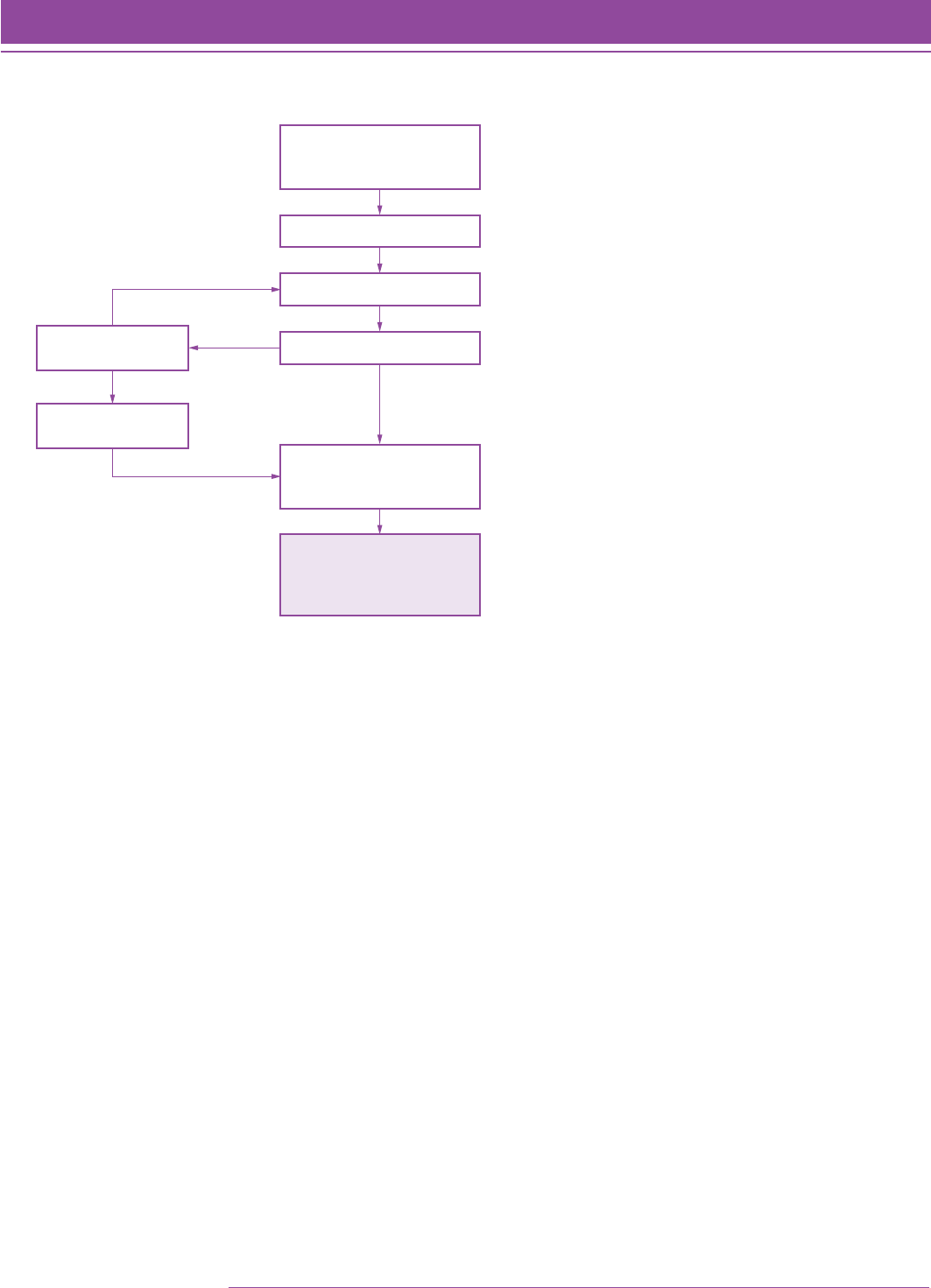

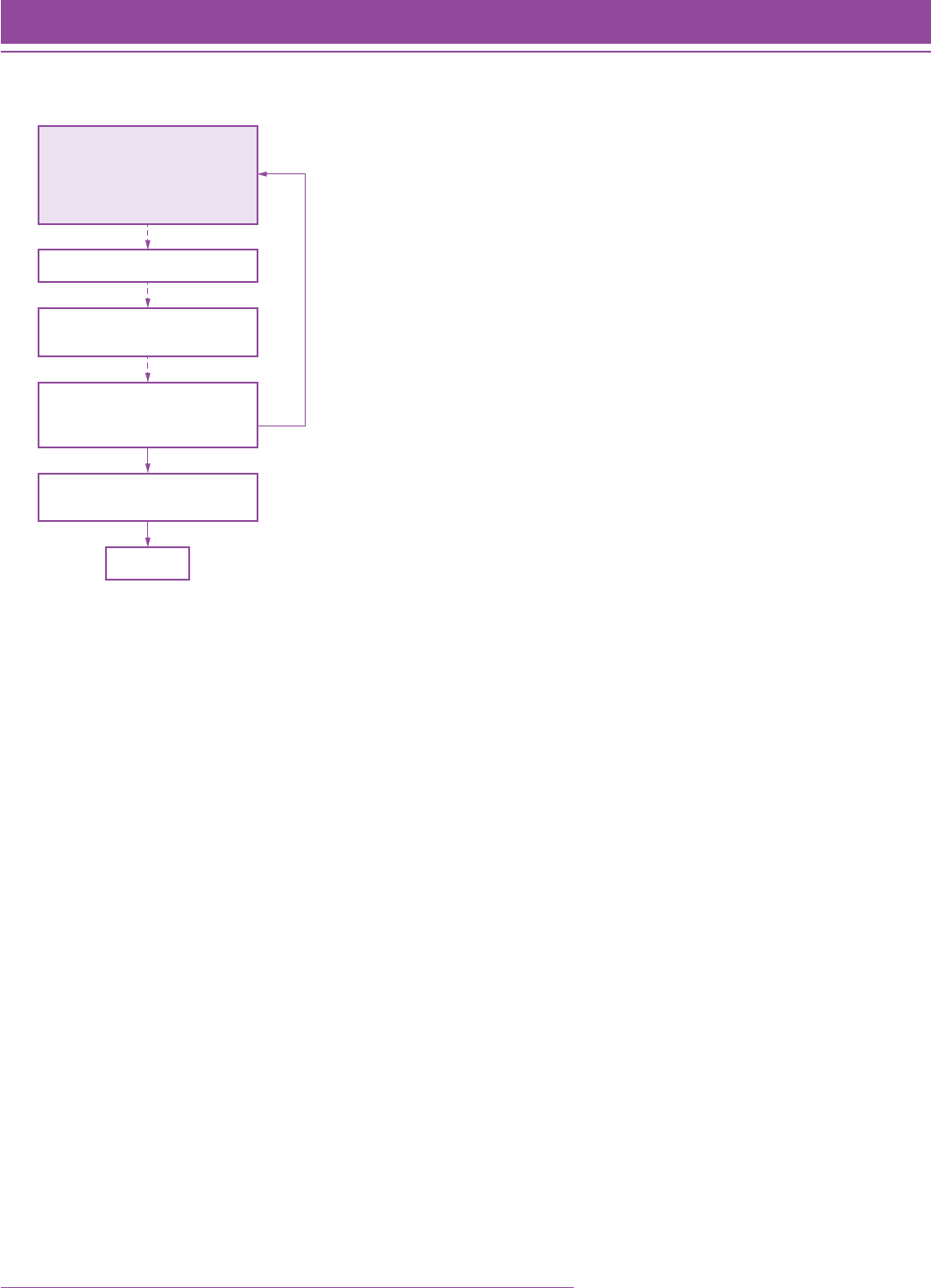

FIGURE 16.......................................76

The Start phase of the SMI College & Career computer-

adaptive algorithm.

FIGURE 17.......................................78

The Step phase of the SMI College & Career computer-

adaptive algorithm.

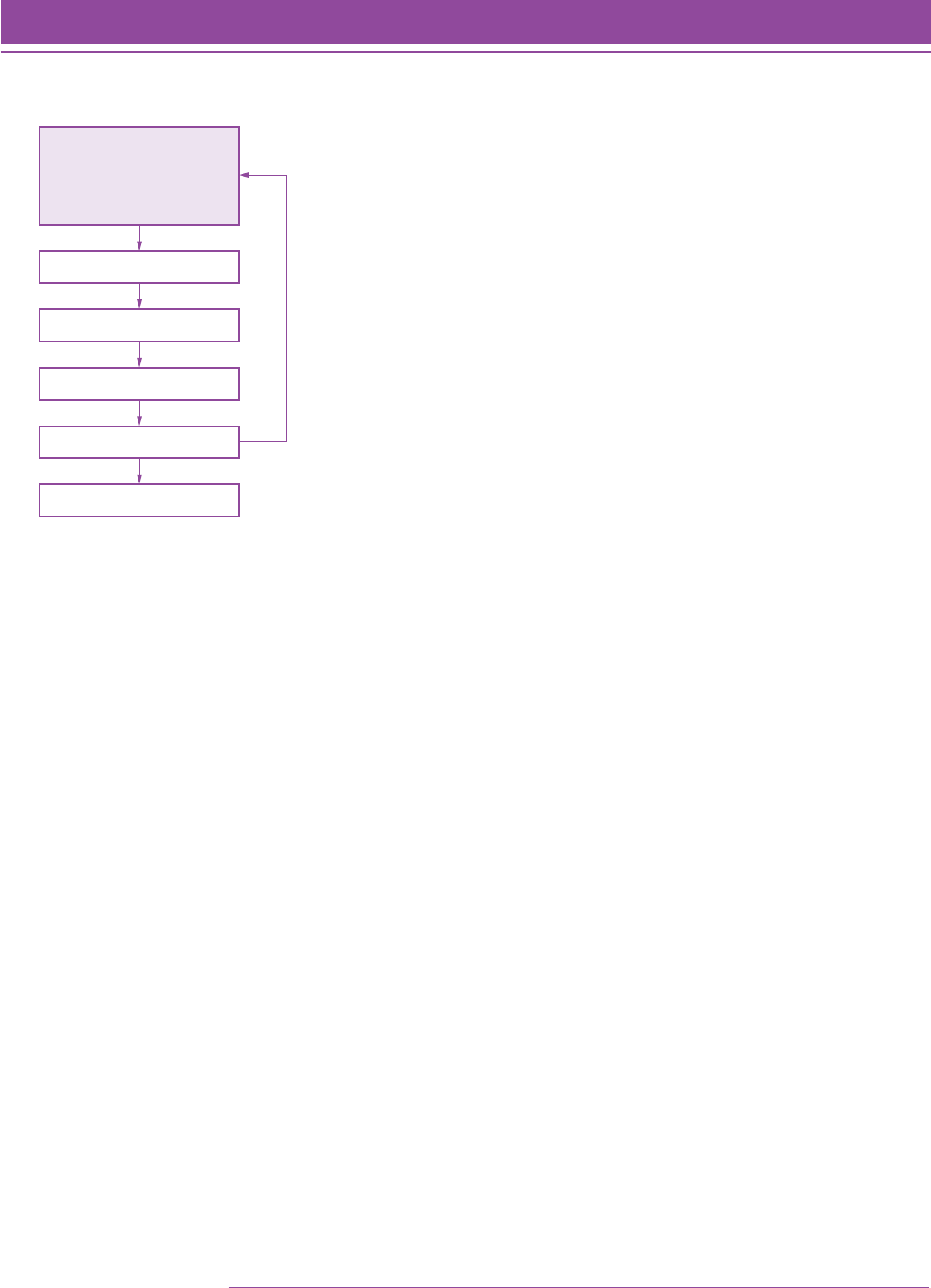

FIGURE 18.......................................79

The Stop phase of the SMI College & Career computer-

adaptive algorithm.

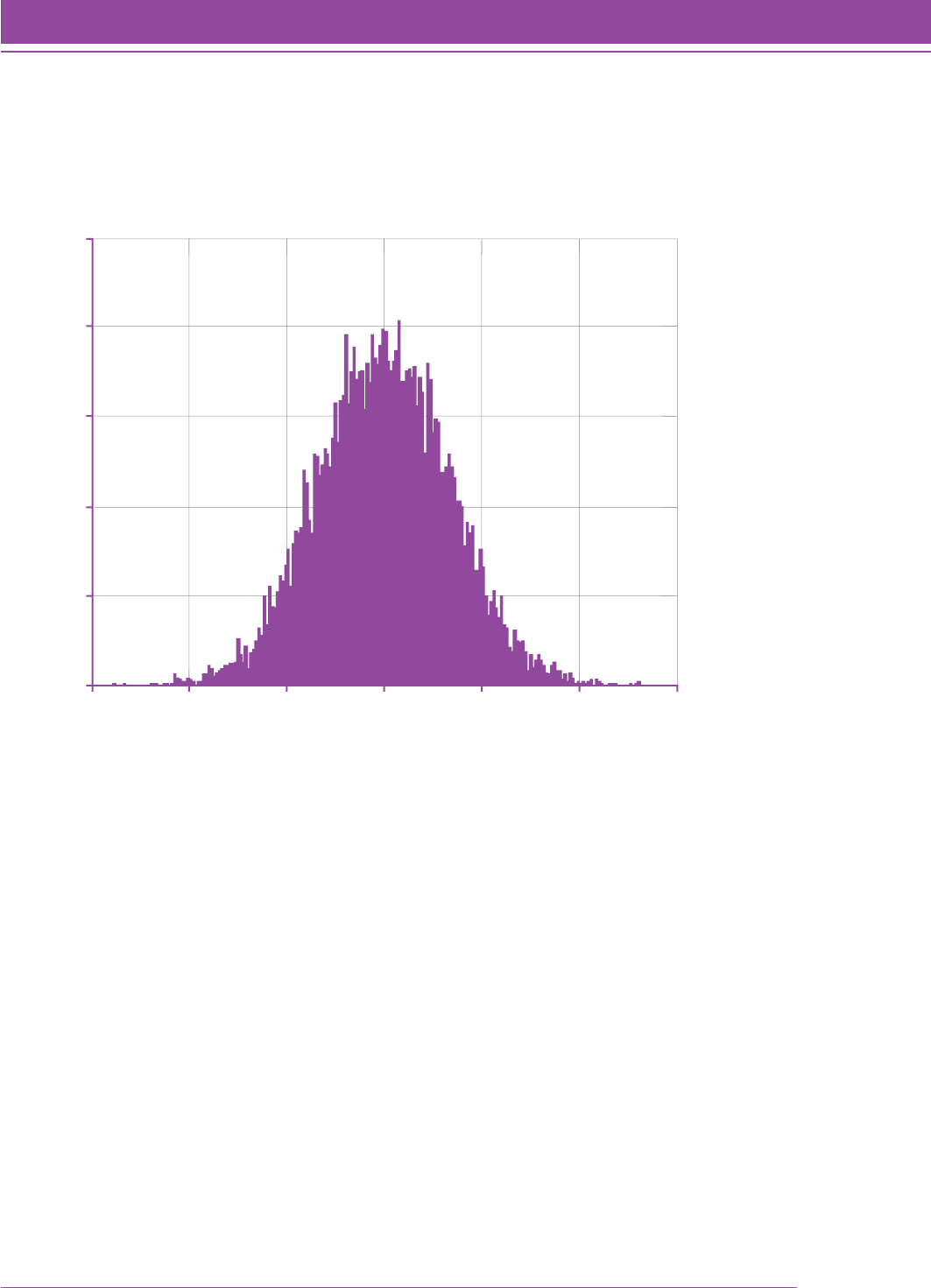

FIGURE 19.......................................85

Distribution of SEMs from simulations of student

SMI College & Career scores, Grade 5.

FIGURE 20......................................156

SMI Validation Study, Phase I: SMI Quantile measures

displayed by location and grade.

FIGURE 21......................................157

SMI Validation Study, Phase II: SMI Quantile measures

displayed by location and grade.

FIGURE 22......................................157

SMI Validation Study, Phase II: SMI Quantile measures

displayed by grade.

FIGURE 23......................................158

SMI Validation Study, Phase III: SMI Quantile measures

displayed by location and grade.

FIGURE 24......................................158

SMI Validation Study, Phase III: SMI Quantile measures

displayed by grade.

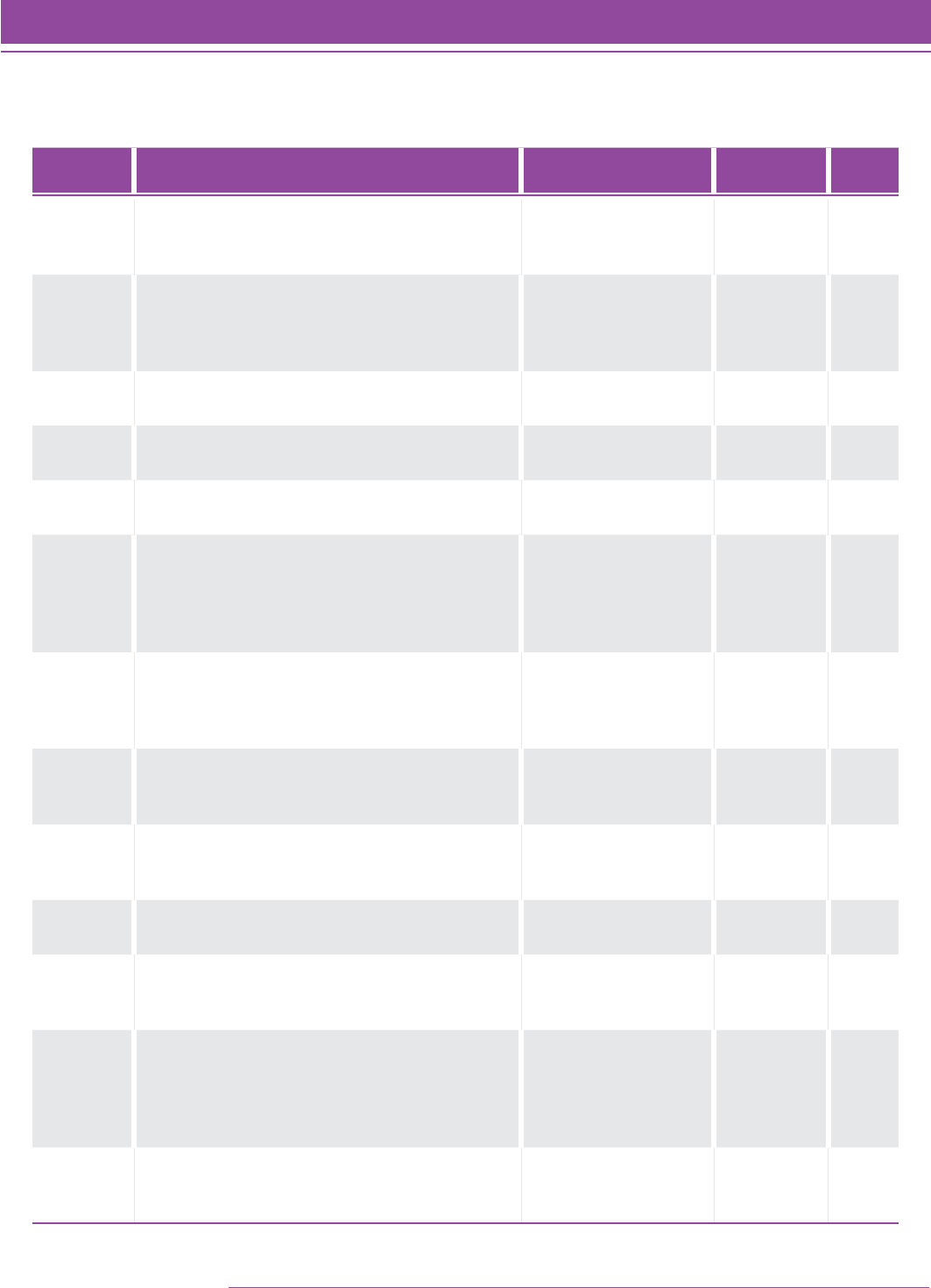

Tables

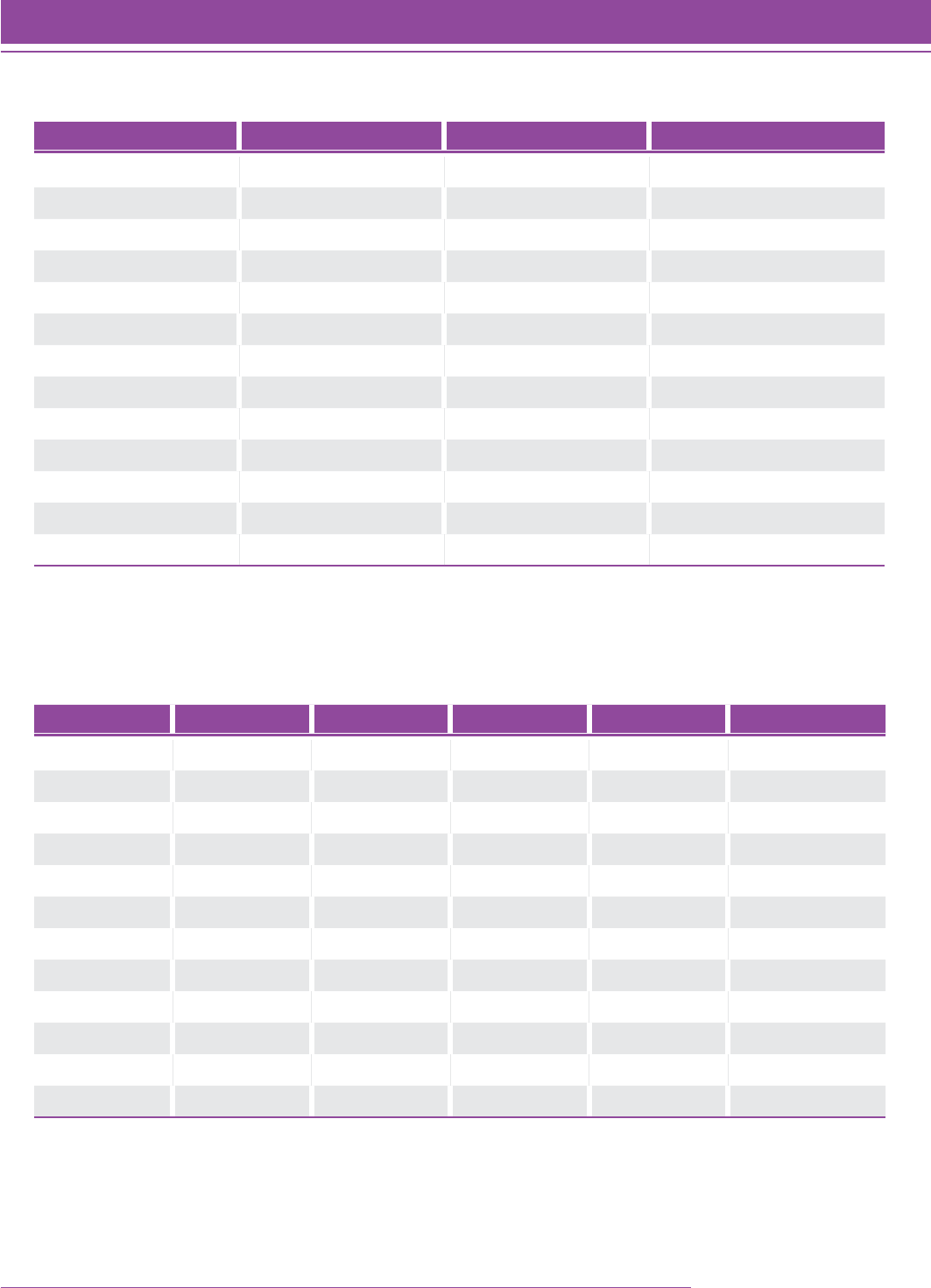

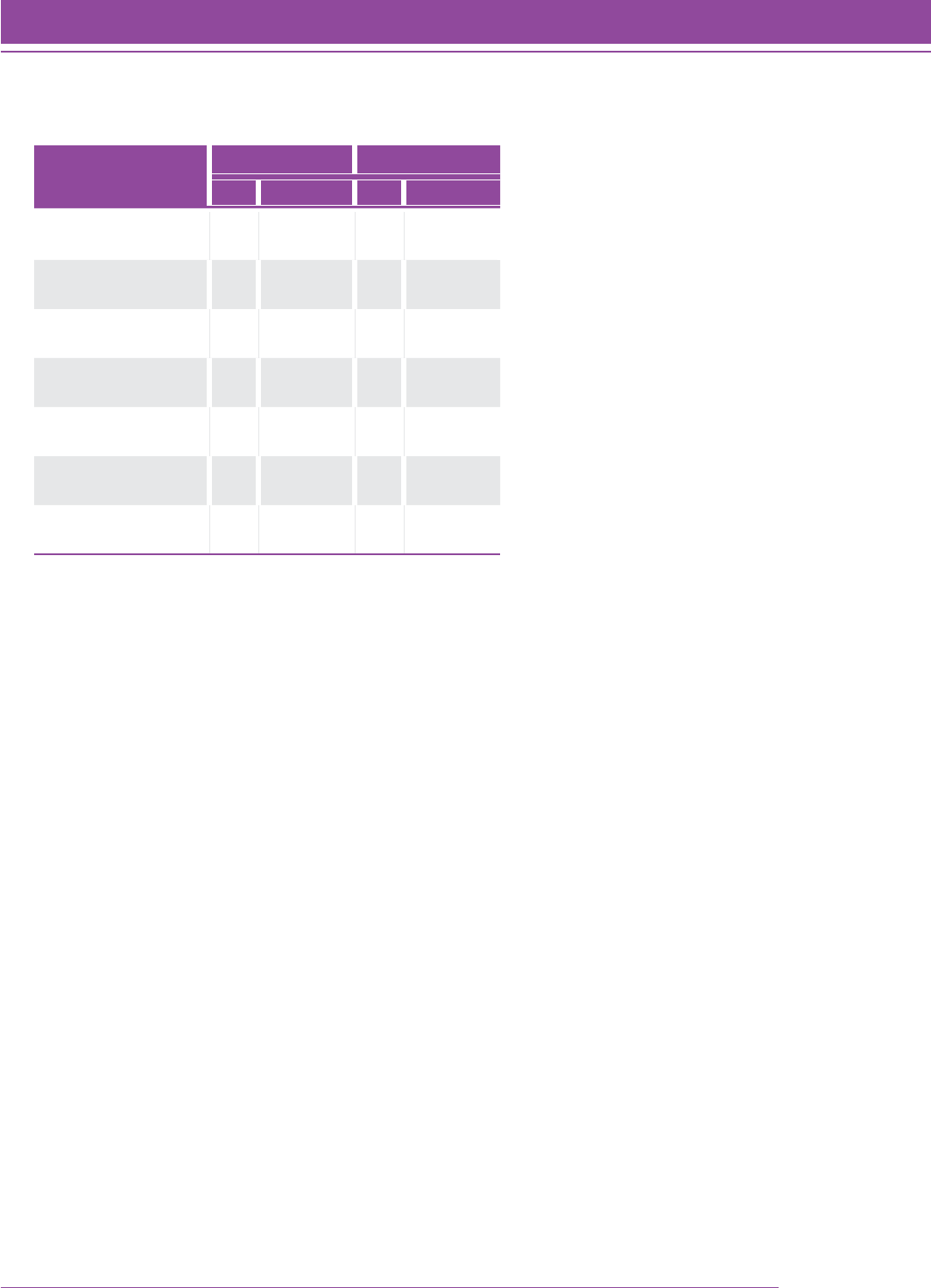

TABLE 1.........................................23

Field study participation by grade and gender.

TABLE 2.........................................23

Test form administration by level.

TABLE 3.........................................24

Summary item statistics from the Quantile Framework

field study.

TABLE 4.........................................27

Mean and median Quantile measure for N 5 9,656

students with complete data.

TABLE 5.........................................29

Mean and median Quantile measure for the final set of

N 5 9,176 students.

TABLE 6.........................................37

Results from field studies conducted with the Quantile

Framework.

TABLE 7.........................................38

Results from linking studies conducted with the Quantile

Framework.

TABLE 8.........................................58

Success rates for a student with a Quantile measure of 750Q

and skills of varying difficulty (demand).

List of Figures and Tables


TABLE 9.........................................58

Success rates for students with different Quantile measures

of achievement for a task with a Quantile measure of 850Q.

TABLE 10........................................60

SMI College & Career performance level ranges by grade.

TABLE 11........................................67

Designed strand profile for SMI: Kindergarten through

Grade 11 (Algebra II).

TABLE 12........................................73

Actual strand profile for SMI after item writing and review.

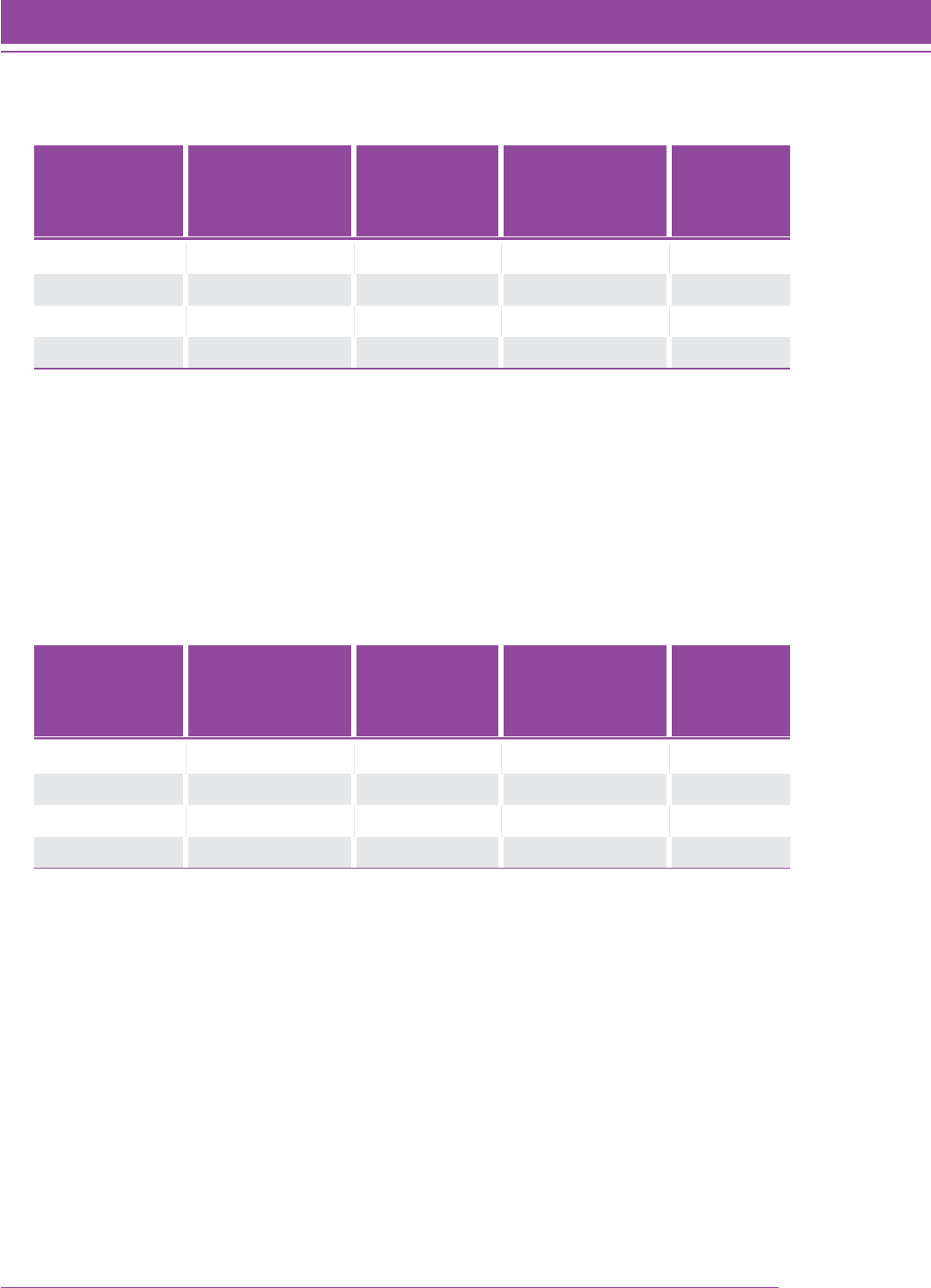

TABLE 13.......................................134

SMI Marginal reliability estimates, by district and overall.

TABLE 14.......................................135

SMI test-retest reliability estimates over a one-week period,

by grade.

TABLE 15.......................................139

SMI Validation Study—Phase I: Descriptive statistics for

the SMI Quantile measures.

TABLE 16.......................................140

SMI Validation Study—Phase I: Means and standard

deviations for the SMI Quantile measures, by gender.

TABLE 17.......................................140

SMI Validation Study—Phase I: Means and standard

deviations for the SMI Quantile measures, by race/ethnicity.

TABLE 18.......................................143

SMI Validation Study—Phase II: Descriptive statistics for the

SMI Quantile measures (spring administration).

TABLE 19.......................................144

SMI Validation Study—Phase II: Means and standard

deviations for the SMI Quantile measures, by gender

(spring administration).

TABLE 20.......................................145

SMI Validation Study—Phase II: Means and standard

deviations for the SMI Quantile measures, by race/ethnicity

(spring administration).

TABLE 21.......................................147

SMI Validation Study—Phase III: Descriptive statistics for

the SMI Quantile measures (spring administration).

TABLE 22.......................................149

SMI Validation Study—Phase III: Means and standard

deviations for the SMI Quantile measures, by gender

(spring administration).

TABLE 23.......................................150

SMI Validation Study—Phase III: Means and standard

deviations for the SMI Quantile measures, by race/ethnicity

(spring administration).

TABLE 24.......................................152

Harford County Public Schools—Intervention study means

and standard deviation for the SMI Quantile measures.

TABLE 25.......................................152

Raytown Consolidated School District No. 2—FASTT Math

intervention program participation means and standard

deviations for the SMI Quantile measures.

TABLE 26.......................................153

Inclusion in math intervention program means and

standard deviations for the SMI Quantile measures.

TABLE 27.......................................153

Gifted and Talented status means and standard deviations

for the SMI Quantile measures.

TABLE 28.......................................154

Gifted and Talented status means and standard deviations

for the SMI Quantile measures.

TABLE 29.......................................155

Special Education status means and standard deviations

for the SMI Quantile measures.

TABLE 30.......................................155

Special Education status means and standard deviations

for the SMI Quantile measures.

TABLE 31.......................................159

Correlations among the Decatur School District test scores.

TABLE 32.......................................159

Correlations between SMI Quantile measures and Harford

County Public Schools test scores.

TABLE 33.......................................160

Alief Independent School District—descriptive statistics for

SMI Quantile measures and 2010 TAKS mathematics scores,

by grade.

TABLE 34.......................................161

Alief Independent School District—descriptive statistics for

SMI Quantile measures and 2011 TAKS mathematics scores,

by grade.

TABLE 35.......................................162

Brevard Public Schools—descriptive statistics for SMI Quantile

measures and 2010 FCAT mathematics scores, by grade.

TABLE 36.......................................162

Brevard Public Schools—descriptive statistics for SMI Quantile

measures and 2011 FCAT mathematics scores, by grade.

TABLE 37.......................................163

Cabarrus County Schools—descriptive statistics for SMI

Quantile measures and 2010 NCEOG mathematics scores, by

grade.

TABLE 38.......................................163

Cabarrus County Schools—descriptive statistics for SMI

Quantile measures and 2011 NCEOG mathematics scores, by

grade.

TABLE 39.......................................164

Clark County School District—descriptive statistics for SMI

Quantile measures and 2010 CRT mathematics scores, by

grade.


TABLE 40. Clark County School District—descriptive statistics for SMI Quantile measures and 2011 CRT mathematics scores, by grade.

TABLE 41. Harford County Public Schools—descriptive statistics for SMI Quantile measures and 2010 MSA mathematics scores, by grade.

TABLE 42. Harford County Public Schools—descriptive statistics for SMI Quantile measures and 2011 MSA mathematics scores, by grade.

TABLE 43. Kannapolis City Schools—descriptive statistics for SMI Quantile measures and 2010 NCEOG mathematics scores, by grade.

TABLE 44. Kannapolis City Schools—descriptive statistics for SMI Quantile measures and 2011 NCEOG mathematics scores, by grade.

TABLE 45. Killeen Independent School District—descriptive statistics for SMI Quantile measures and 2011 TAKS mathematics scores, by grade.

TABLE 46. Description of longitudinal panel across districts, by grade.

TABLE 47. Description of longitudinal panel across districts, by grade for students with at least three Quantile measures.

TABLE 48. Results of regression analyses for longitudinal panel, across grades.

TABLE 49. Alief Independent School District—bilingual means and standard deviations for the SMI Quantile measures.

TABLE 50. Cabarrus County Schools—English language learners (ELL) means and standard deviations for the SMI Quantile measures.

TABLE 51. Alief ISD—English as a second language (ESL) means and standard deviations for the SMI Quantile measures.

TABLE 52. Alief ISD—limited English proficiency (LEP) status means and standard deviations for the SMI Quantile measures.

TABLE 53. Clark County School District—limited English proficiency (LEP) status means and standard deviations for the SMI Quantile measures.

TABLE 54. Kannapolis City Schools—limited English proficiency (LEP) status means and standard deviations for the SMI Quantile measures.

TABLE 55. Harford County Public Schools—English language learners (ELL) means and standard deviations for the SMI Quantile measures.

TABLE 56. Kannapolis City Schools—limited English proficiency (LEP) means and standard deviations for the SMI Quantile measures.

TABLE 57. Killeen ISD—limited English proficiency (LEP) means and standard deviations for the SMI Quantile measures.

TABLE 58. Alief ISD—Economically disadvantaged means and standard deviations for the SMI Quantile measures.

TABLE 59. Harford County Public Schools—Economically disadvantaged means and standard deviations for the SMI Quantile measures.

TABLE 60. Killeen Independent School District—Economically disadvantaged means and standard deviations for the SMI Quantile measures.

TABLE 61. Alief Independent School District—descriptive statistics for SMI Quantile measures and 2010 TAKS reading scores, by grade.

TABLE 62. Brevard Public Schools—descriptive statistics for SMI Quantile measures and 2010 FCAT reading scores, by grade.

TABLE 63. Cabarrus County Schools—descriptive statistics for SMI Quantile measures and 2010 NCEOG reading scores, by grade.

TABLE 64. Kannapolis City Schools—descriptive statistics for SMI Quantile measures and 2010 NCEOG reading scores, by grade.

TABLE 65. Clark County School District—descriptive statistics for SMI Quantile measures and 2010 CRT reading scores, by grade.

TABLE 66. Alief Independent School District—descriptive statistics for SMI Quantile measures and 2011 TAKS reading scores, by grade.


Introduction


Scholastic Math Inventory™ College & Career, developed by Scholastic Inc., is an objective assessment of a

student’s readiness for mathematics instruction from Kindergarten through Algebra II (or High School Integrated

Math III), which is commonly considered an indicator of college and career readiness. SMI College & Career

quantifies a student’s path to and through high school mathematics and can be administered to students in Grades

K–12. A computer-adaptive test, SMI College & Career delivers test items targeted to students’ ability level. The

measurement system for SMI College & Career is the Quantile® Framework for Mathematics. Although SMI College

& Career can be used for several instructional purposes, every completed administration yields a

Quantile® measure for each student. Teachers and administrators can use the students’ Quantile measures to:

• Conduct universal screening: identify the degree to which students are ready for instruction on certain

mathematical concepts and skills

• Differentiate instruction: provide targeted support for students at their readiness level

• Monitor growth: gauge students’ developing understandings of mathematics in relation to the objective

measure of algebra readiness and Algebra II completion and to being on track for college and career at the

completion of high school

The Quantile Framework for Mathematics, developed by MetaMetrics, Inc., is a scientifically based scale of

mathematics skills and concepts. The Quantile Framework helps educators measure student progress as well as

forecast student development by providing a common metric for mathematics concepts and skills and for students’

abilities. A Quantile measure refers to both the level of difficulty of the mathematical skills and concepts and a

student’s readiness for instruction.

SMI was developed during 2008–2010 and launched in Summer 2010. Additional items were added in 2011 and

again in 2013. Studies of SMI validity began in 2009. For a more robust validity analysis, Phase II of the SMI

validation study was conducted during the 2009–2010 school year. Phase III of the validity study was completed in

2012 with data collected from the 2010–2011 school year.

This technical guide is intended to provide users with the broad research foundation essential for deciding how SMI

College & Career should be administered and what kinds of inferences can be drawn from the results pertaining

to students’ mathematical achievement. In addition, this guide describes the development and psychometric

characteristics of this assessment and the Quantile Framework.


Features of Scholastic Math Inventory College & Career

SMI College & Career is a research-based universal screener and growth monitor designed to measure students’

readiness for instruction. The assessment is computer-based and features two components:

• The actual student test, which is computer adaptive, with more than 5,000 items

• The management system, Scholastic Achievement Manager, where enrollments are managed and

customized features and data reports can be accessed

SMI College & Career Test

When students are assessed with SMI College & Career, they receive a single measure—a Quantile measure—that

indicates their instructional readiness for calibrated content to and through Algebra II/High School Integrated Math

III. As a computer-adaptive test, SMI College & Career provides items based on students’ previous responses. This

format allows students to be tested on a large range of skills and concepts in a shorter amount of time and yields a

highly accurate score.

SMI College & Career can be completed in 20–40 minutes and is presented in three parts: the Math Fact Screener,

the Practice Test, and the Scored Test. For Kindergarten and Grade 1 students, an Early Numeracy Screener,

which tests counting and quantity comparison, is used in place of the Math Fact Screener.

The Math Fact Screener is a simple and fast test focused on basic addition and multiplication skills. Once students

demonstrate relative mastery of math facts, they will not experience this part of the assessment again.

The Practice Test is a three- to five-question test calibrated far below the students’ current grade level. The purpose

of this part is to ensure students can interact with a computer test successfully and to provide them an opportunity

to practice with the tools provided in the assessment. Teachers can allow students to skip this part of the test after

its first administration.

The Scored Test part of the assessment produces Quantile measures for the students. In this part, students engage

with at least 25 and as many as 45 test items that follow a consistent four-option, multiple-choice format. Items are

presented in a simple, straightforward format with clear, unambiguous language. Because of the depth of the item

bank, students do not see the same item twice in the same administration or in the next two administrations. Items

at the lowest level are sampled from Kindergarten and Grade 1 mathematical skills and topics; items at the highest

level are sampled from Algebra II/High School Integrated Math III topics. Some items may include mathematical

representations such as diagrams, graphs, tables, and charts. Optional calculators and formula sheets are included

in the program. Calculators will not be visible for problems whose purpose is computational proficiency. Providing

students with paper and pencil during their assessment is recommended. All assessments can be saved and

accessed at another time for completion. This feature is important for students with extended time accommodations.
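The no-repeat rule described above can be pictured with a short Python sketch. It is an illustration only, not the SMI College & Career implementation: the class name `ExposureLog`, its methods, and the string item identifiers are hypothetical, and the sketch assumes that "the next two administrations" means the two administrations that follow the one in which an item was seen.

```python
from collections import deque


class ExposureLog:
    """Hypothetical tracker of items a student has already seen."""

    def __init__(self, lookback: int = 2):
        # Item sets from the most recent completed administrations;
        # older sets fall off automatically once `lookback` is exceeded.
        self._previous = deque(maxlen=lookback)
        # Items presented so far in the administration in progress.
        self._current = set()

    def record(self, item_id: str) -> None:
        """Mark an item as presented in the current administration."""
        self._current.add(item_id)

    def is_eligible(self, item_id: str) -> bool:
        """True if the item was not seen now or in the tracked past tests."""
        if item_id in self._current:
            return False
        return not any(item_id in past for past in self._previous)

    def close_administration(self) -> None:
        """Archive the current administration and start a new one."""
        self._previous.append(frozenset(self._current))
        self._current = set()


log = ExposureLog()
log.record("item-001")                # seen in administration 1
print(log.is_eligible("item-001"))    # same administration
log.close_administration()
print(log.is_eligible("item-001"))    # administration 2
```

In this sketch an item becomes eligible again only after two further administrations have been closed, which is one way to read the rule stated in the text.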


Scholastic Achievement Manager

Scholastic Achievement Manager (SAM) is the data backbone for all Scholastic technology programs, including SMI

College & Career. In SAM, educators can manage enrollment, create classes, and assign usernames and passwords.

SAM also provides teachers and administrators with nine template reports that support this formative assessment

with actionable data for teachers, students, parents, and administrators. These reports feature a growth report that

provides a quantifiable trajectory to and through Algebra II/High School Integrated Math III—a course cited as the

gatekeeper to college and career readiness—and provide a tool to differentiate math instruction by linking Quantile

measures to classroom resources and basal math textbooks.

There are over 5,000 test items that were rigorously developed to connect to a Quantile measure. When students are

tested with SMI College & Career, they are tested on items that represent five content strands (Number & Operations;

Algebraic Thinking, Patterns, and Proportional Reasoning; Geometry, Measurement, and Data; Statistics & Probability

[Grades 6–11 only]; and Expressions & Equations, Algebra, and Functions [Grades 6–11 only]) and receive a single

measure—a Quantile measure—that indicates their instructional readiness for calibrated content.

Scholastic Central

Scholastic Central puts your assessment calendar, data snapshots and news regarding student performance and

usage, instructional recommendations, and professional learning resources all in one centralized location to make it

easy to assess and plan instruction.

Leadership Dashboard

Administrators access the Leadership Dashboard on Scholastic Central to view high-level data snapshots and data

analytics for the schools using SMI College & Career. Follow up with individual schools for appropriate intervention to

increase student performance to proficiency.

You can use the Leadership Dashboard to:

• View Performance Level and Growth data snapshots, with pop-up information and a table of detailed data

by school

• Filter school- and district-level data by demographics

• View resources for Implementation Success Factors

• Schedule and view reports

Rationale for and Uses of Scholastic Math Inventory College & Career

A comprehensive program of mathematics education includes curriculum, assessment, and instruction.

• Curriculum is the planned set of standards and materials that are intended for implementation during the

academic year.

• Instruction is the enacting of curriculum; it is when students build new concepts and skills.

• Assessment should inform instruction by describing skills and concepts students are ready to learn.

The challenge for educators is meeting the demand of curriculum in a classroom of diverse learners. This challenge

is met when the curriculum unfolds in a way that all students make progress. Typically, the best starting point for

instruction is to identify the skills and concepts that each student is ready to learn.

SMI College & Career provides educators with information related to the difficulty of skills and concepts, as well as

the increasing difficulty of content progressions. This information is found in the SMI skills and concepts database at

www.scholastic.com/SMI.

Copyright © 2014 by Scholastic Inc. All rights reserved.

Copyright © 2014 by Scholastic Inc. All rights reserved.

Introduction 11

Introduction

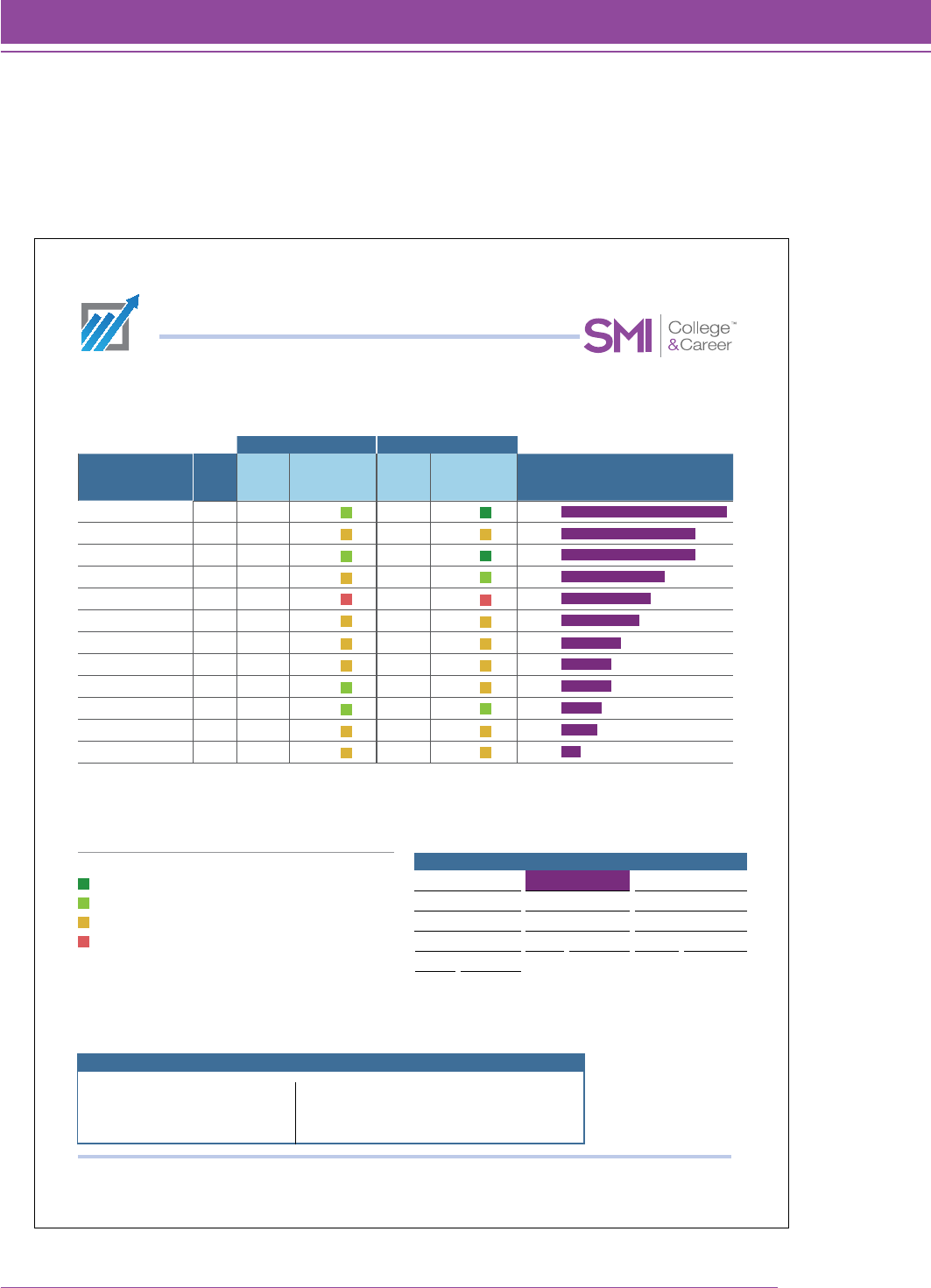

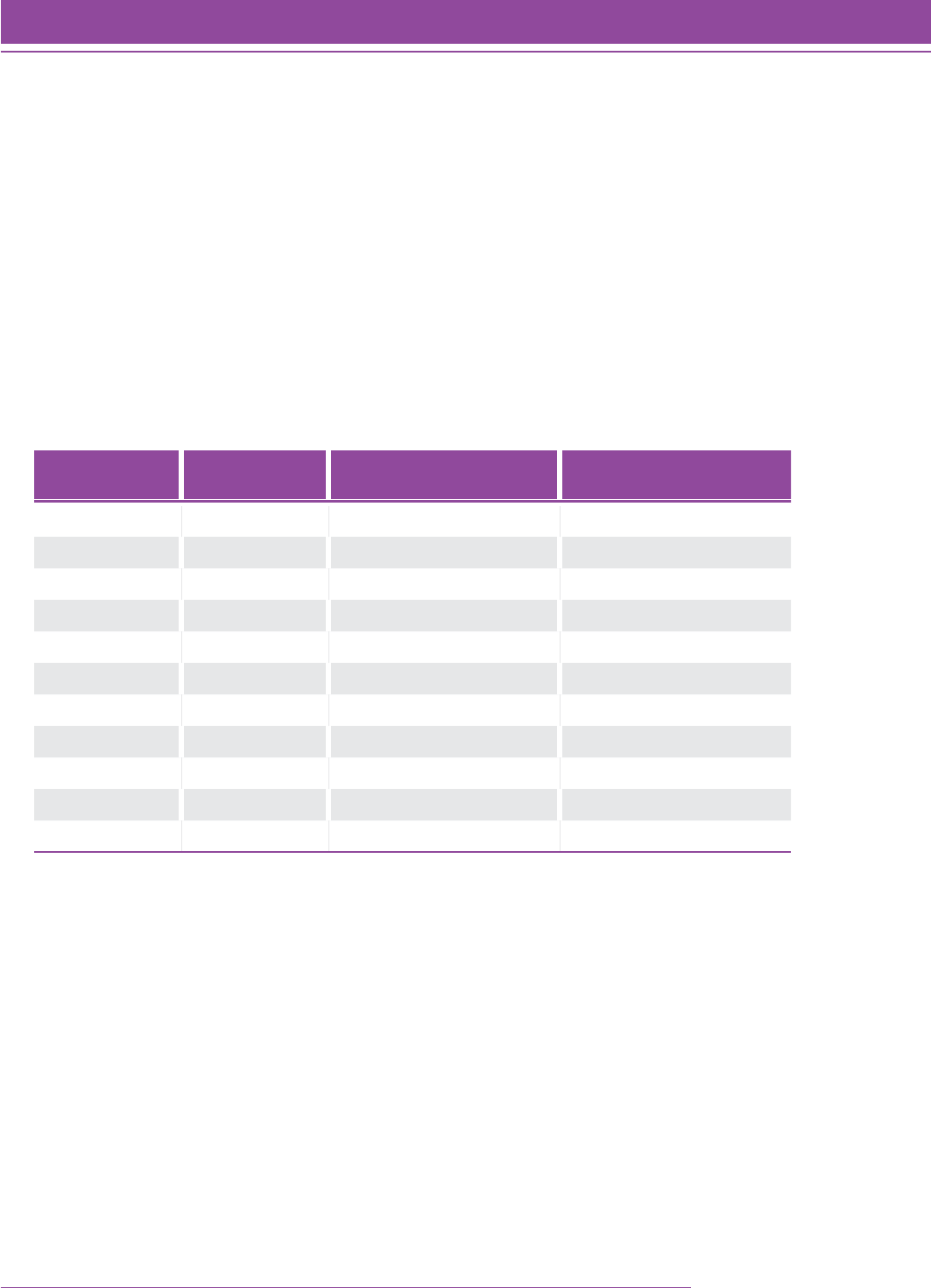

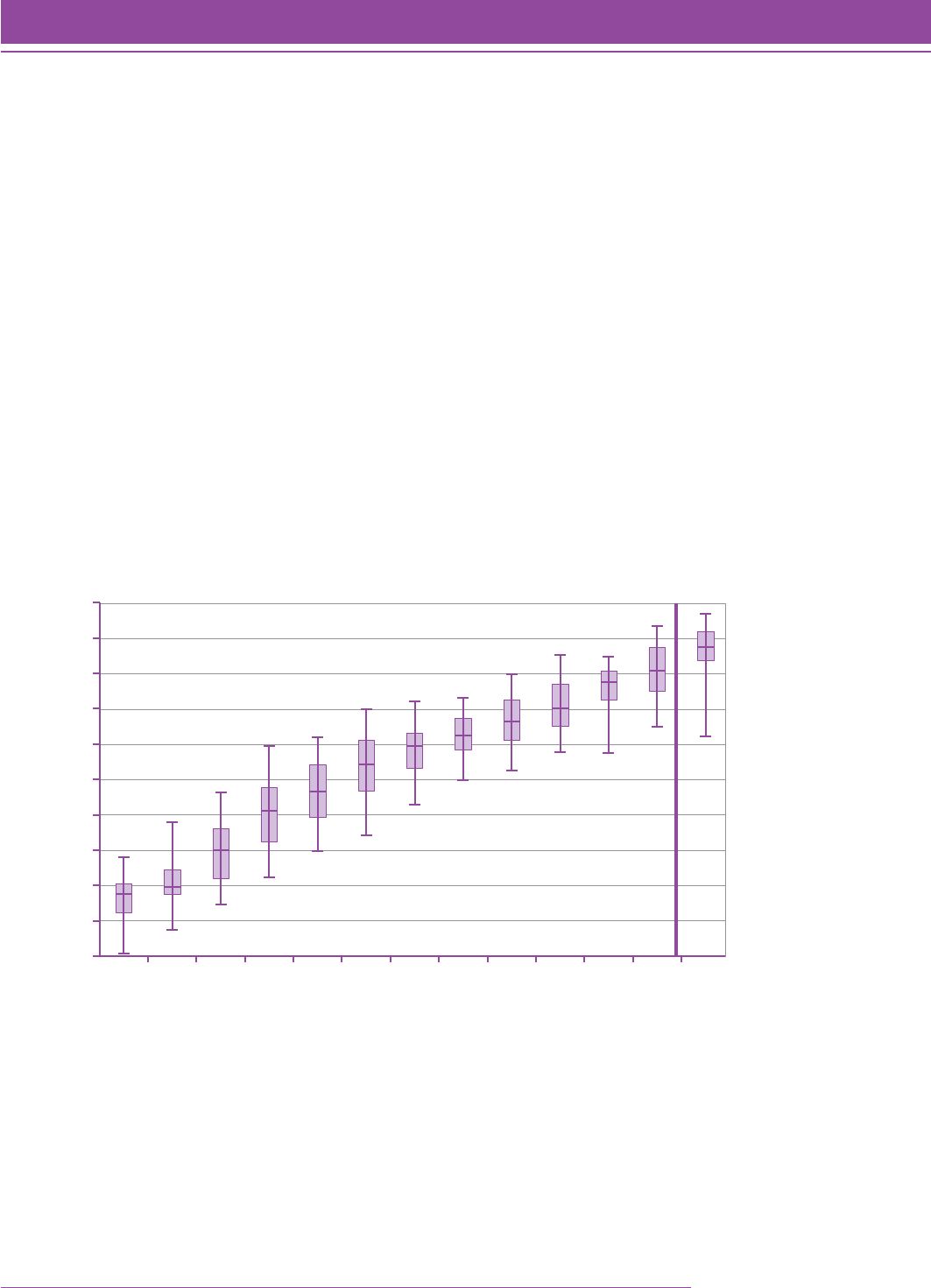

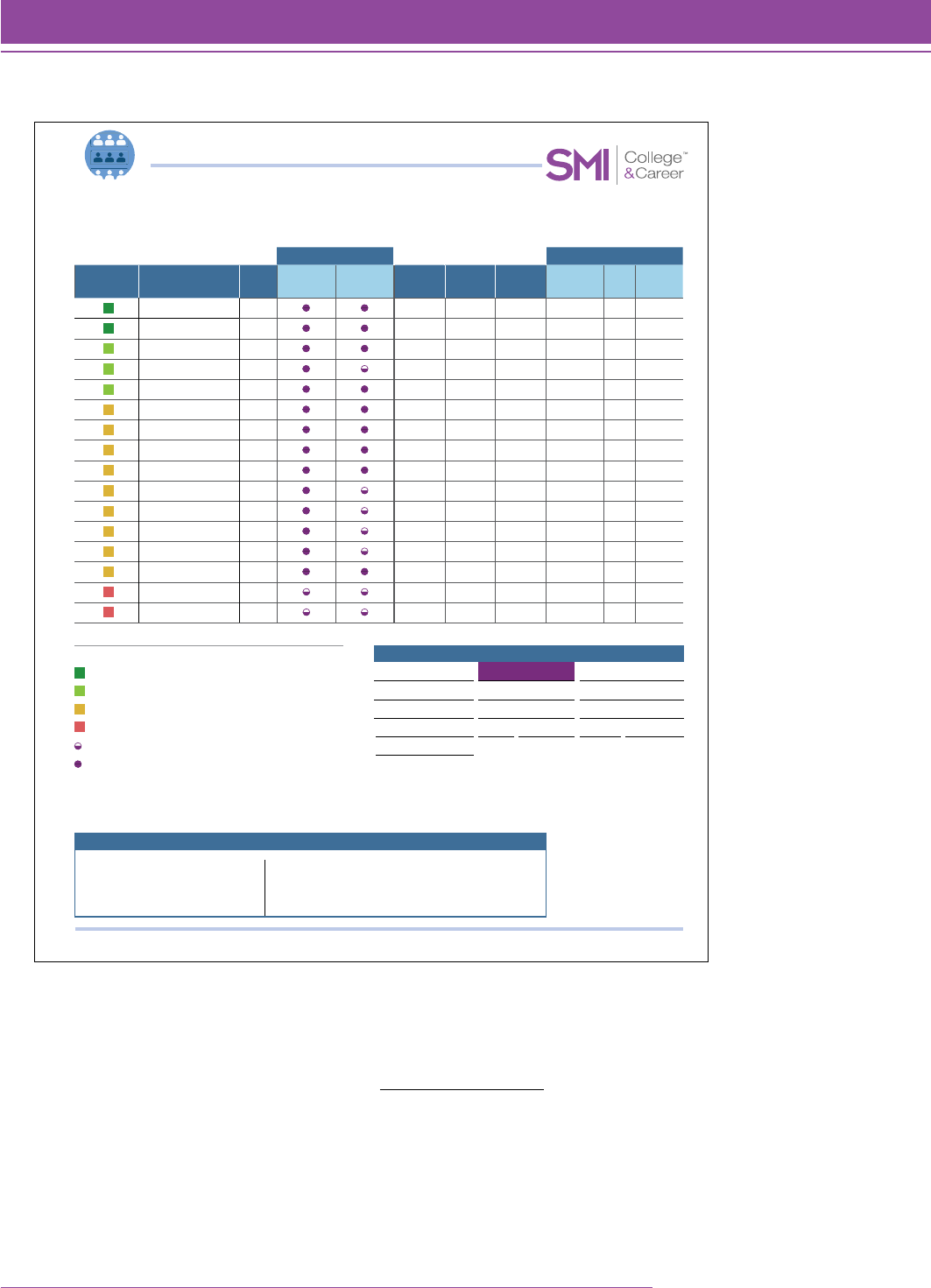

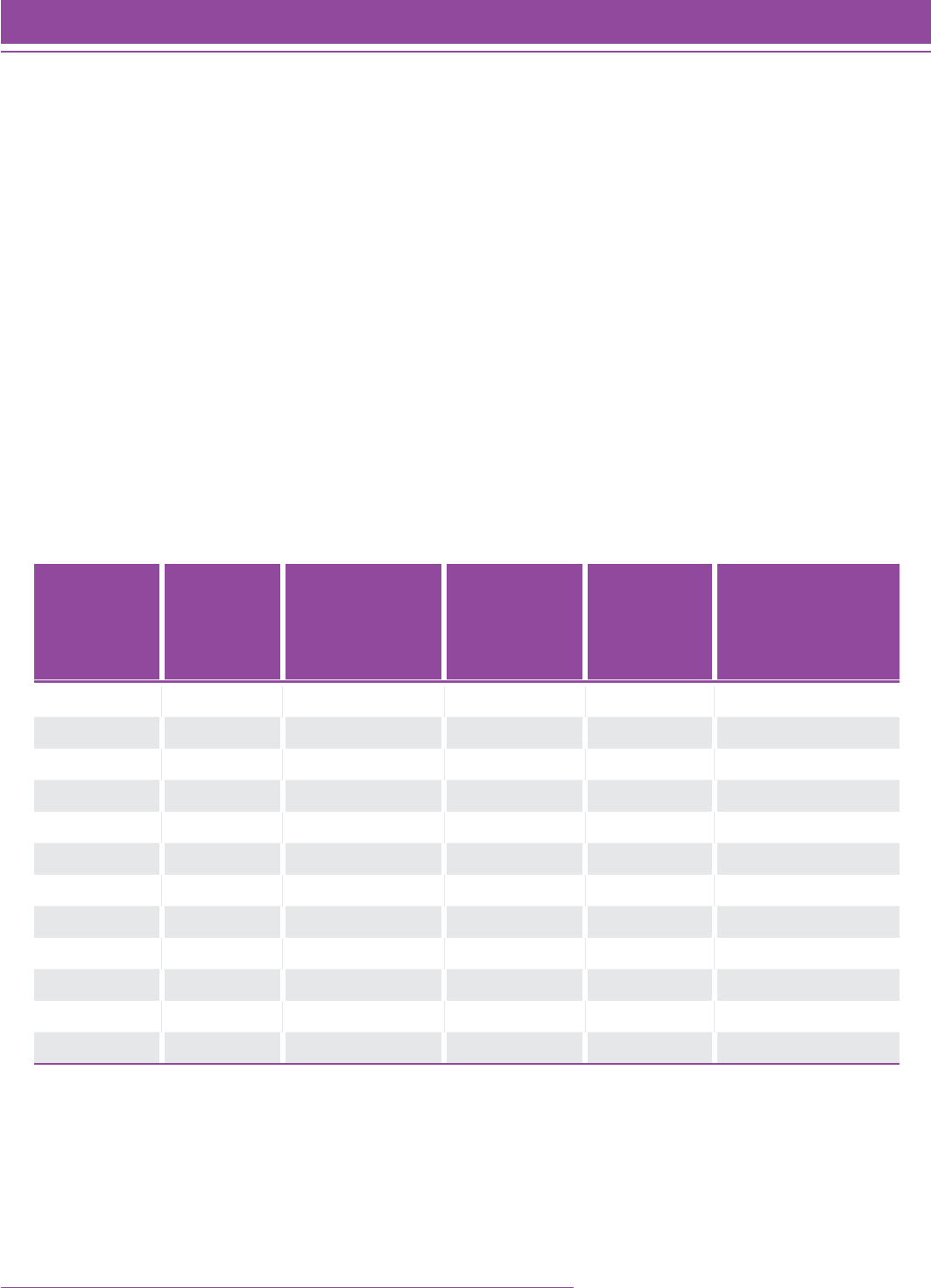

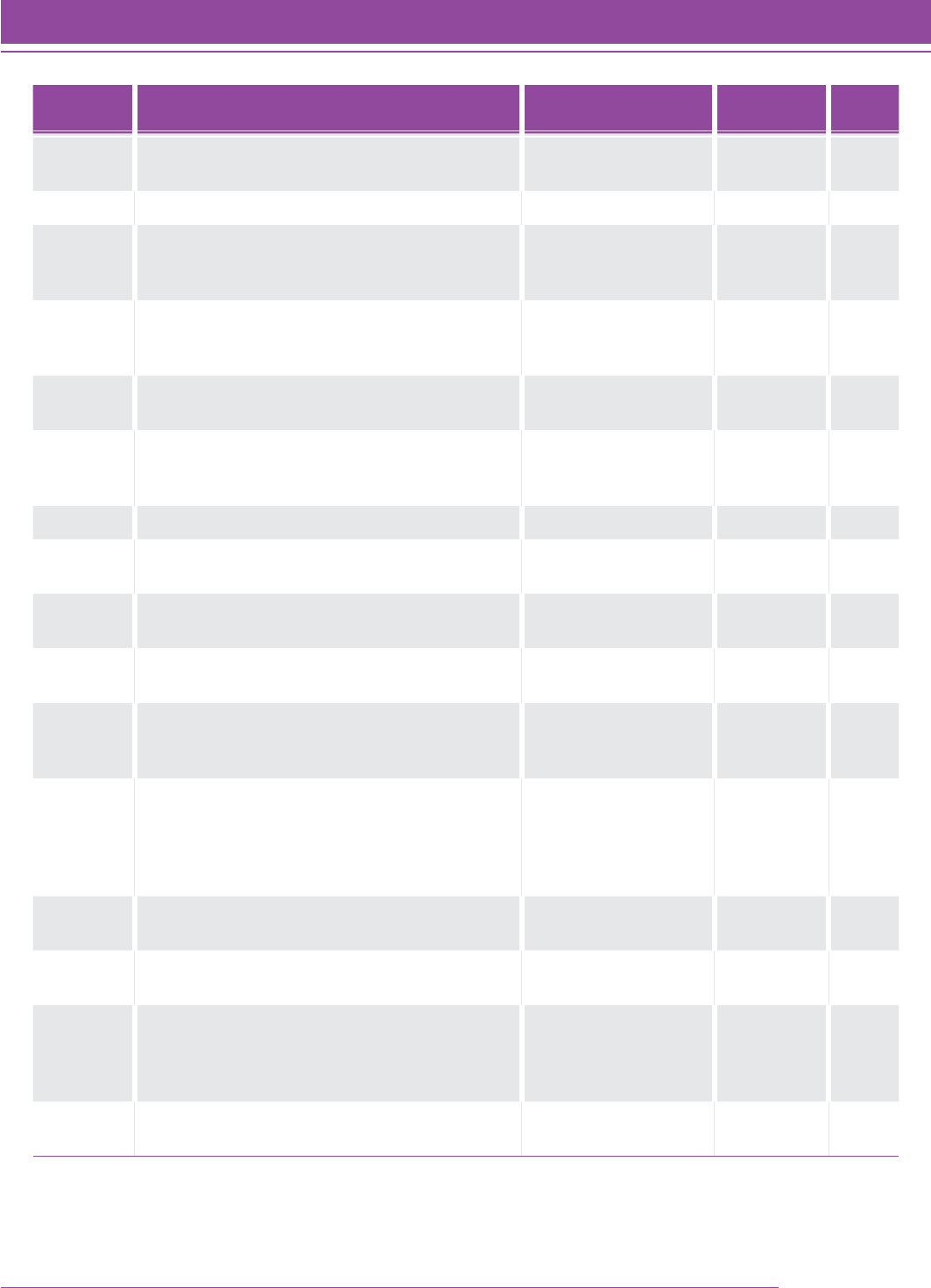

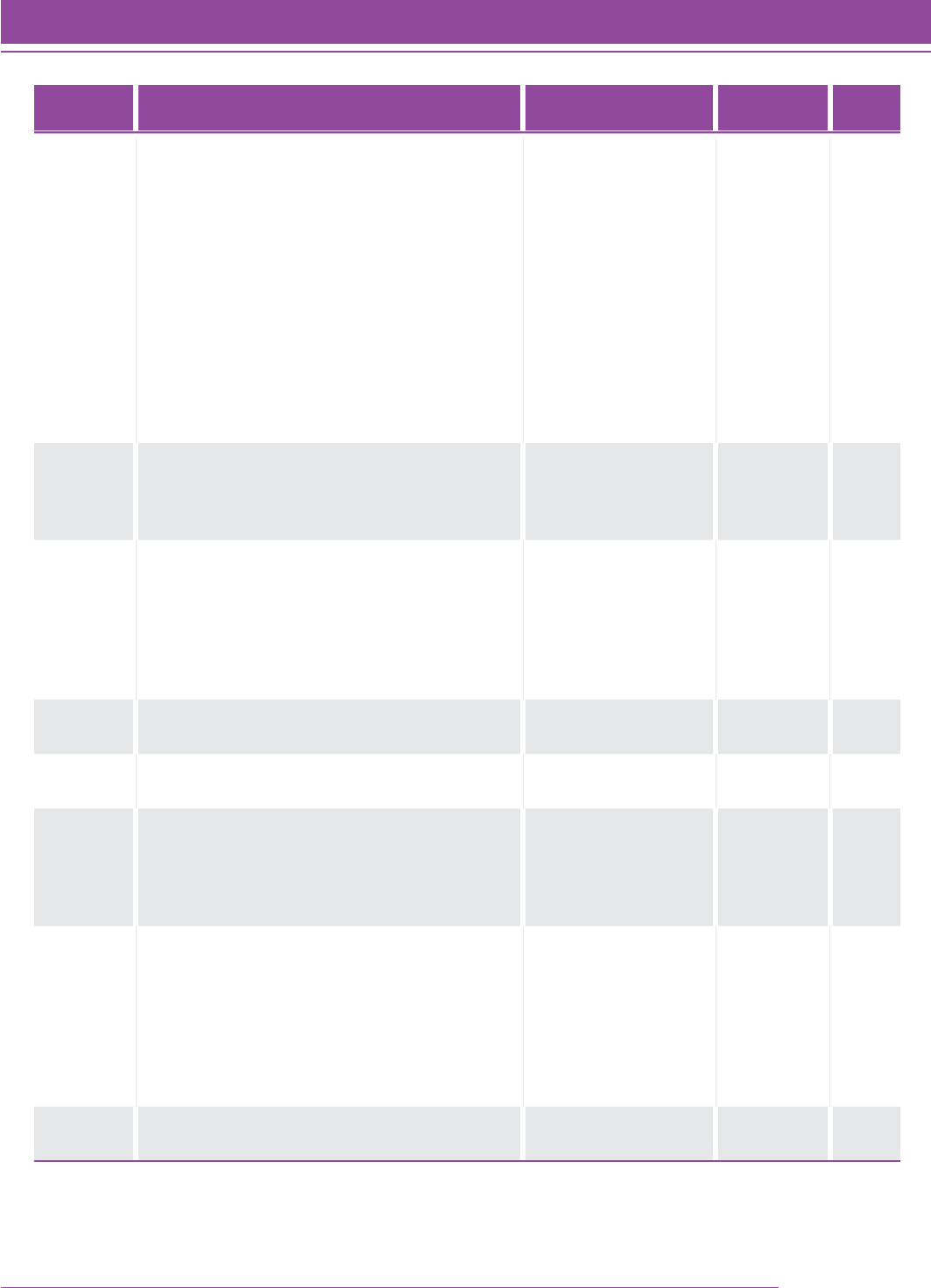

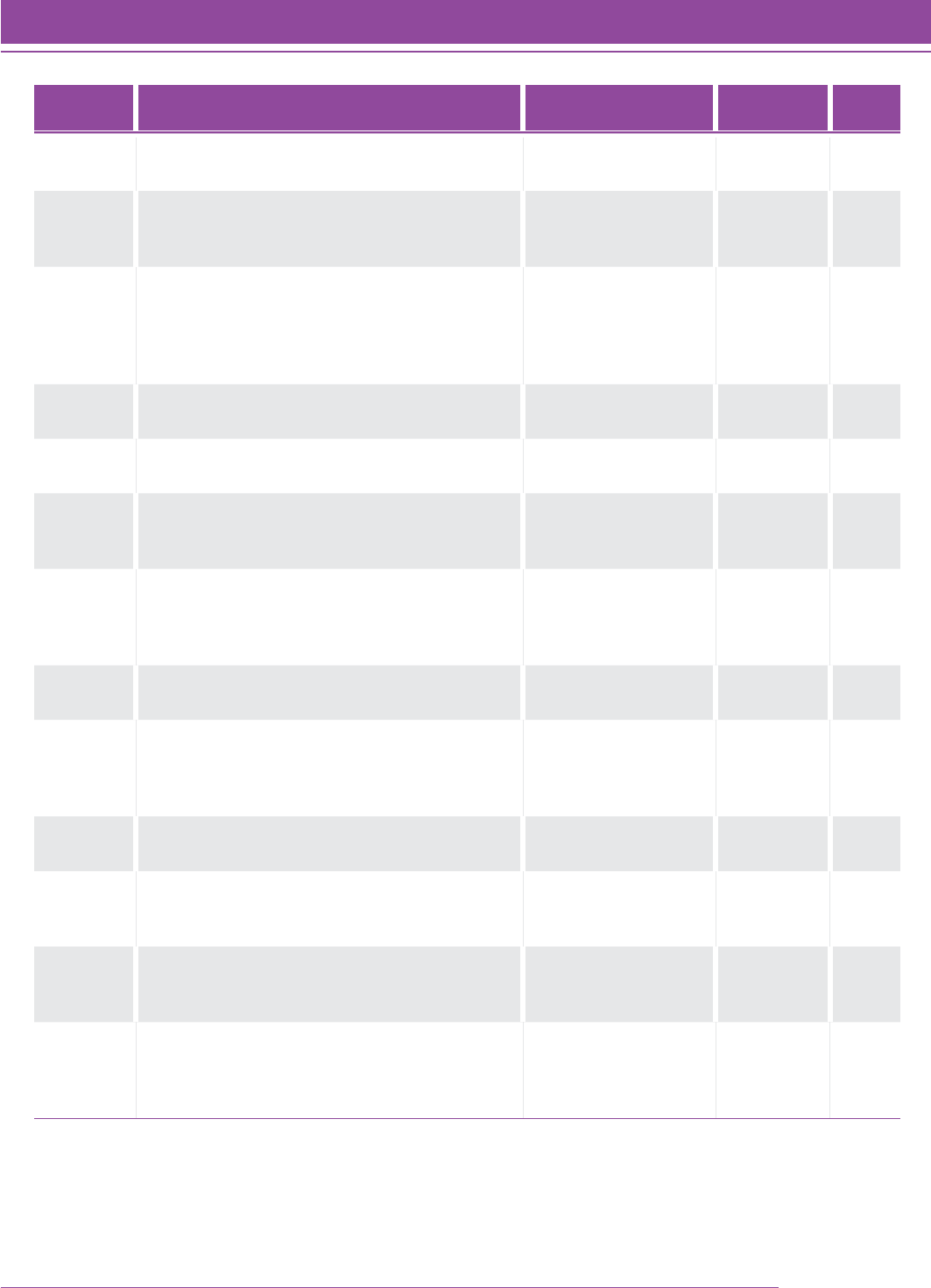

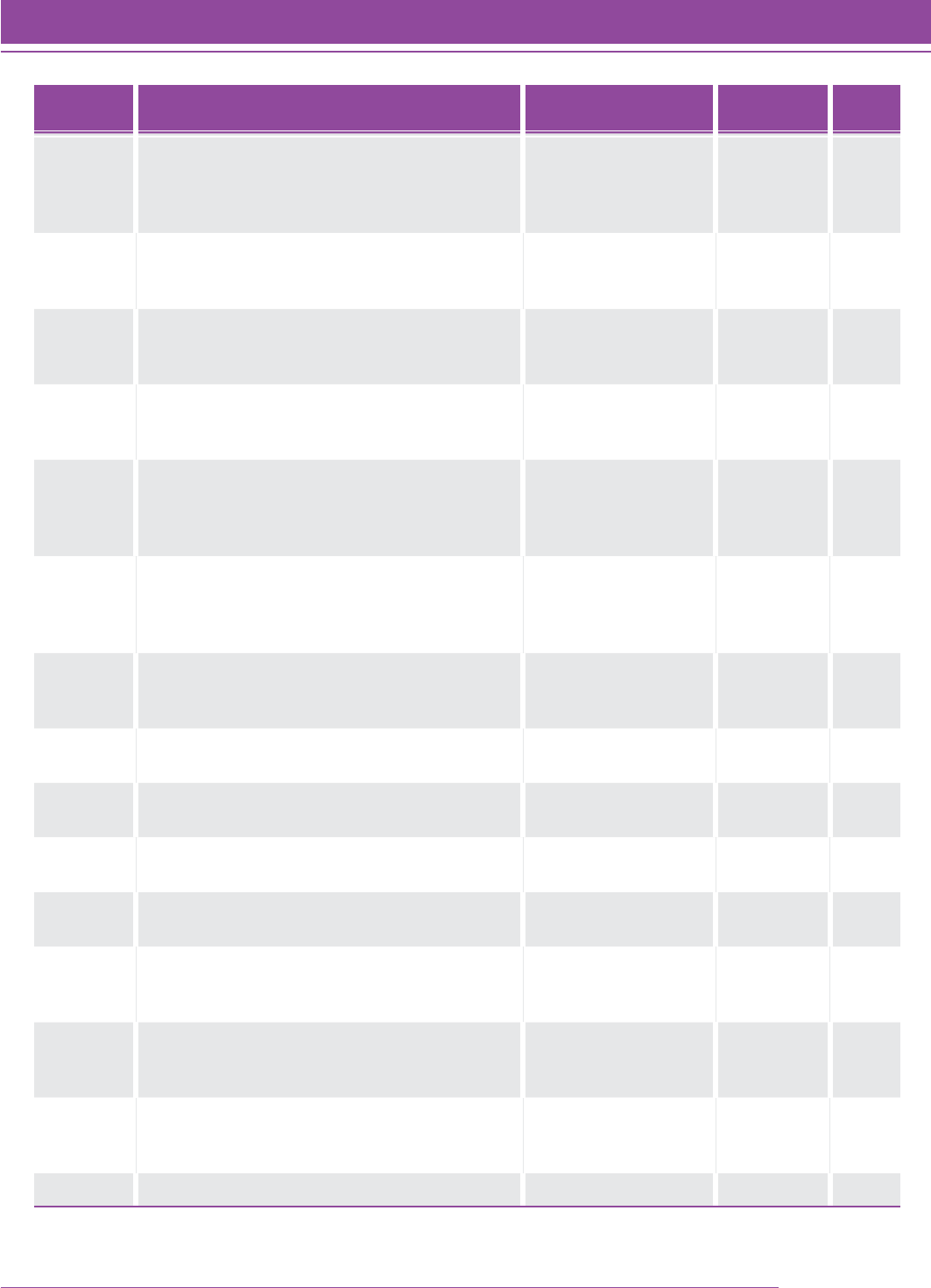

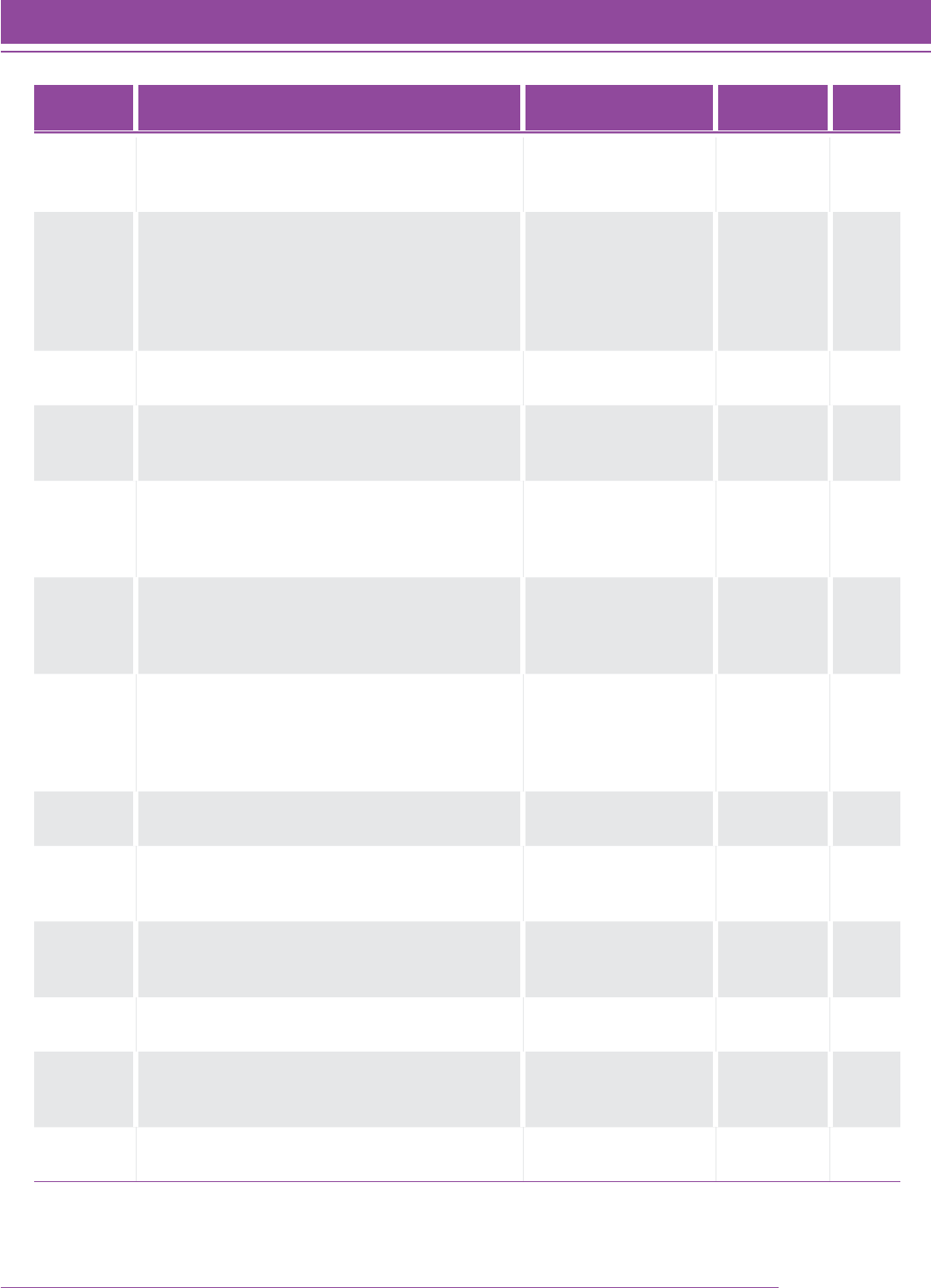

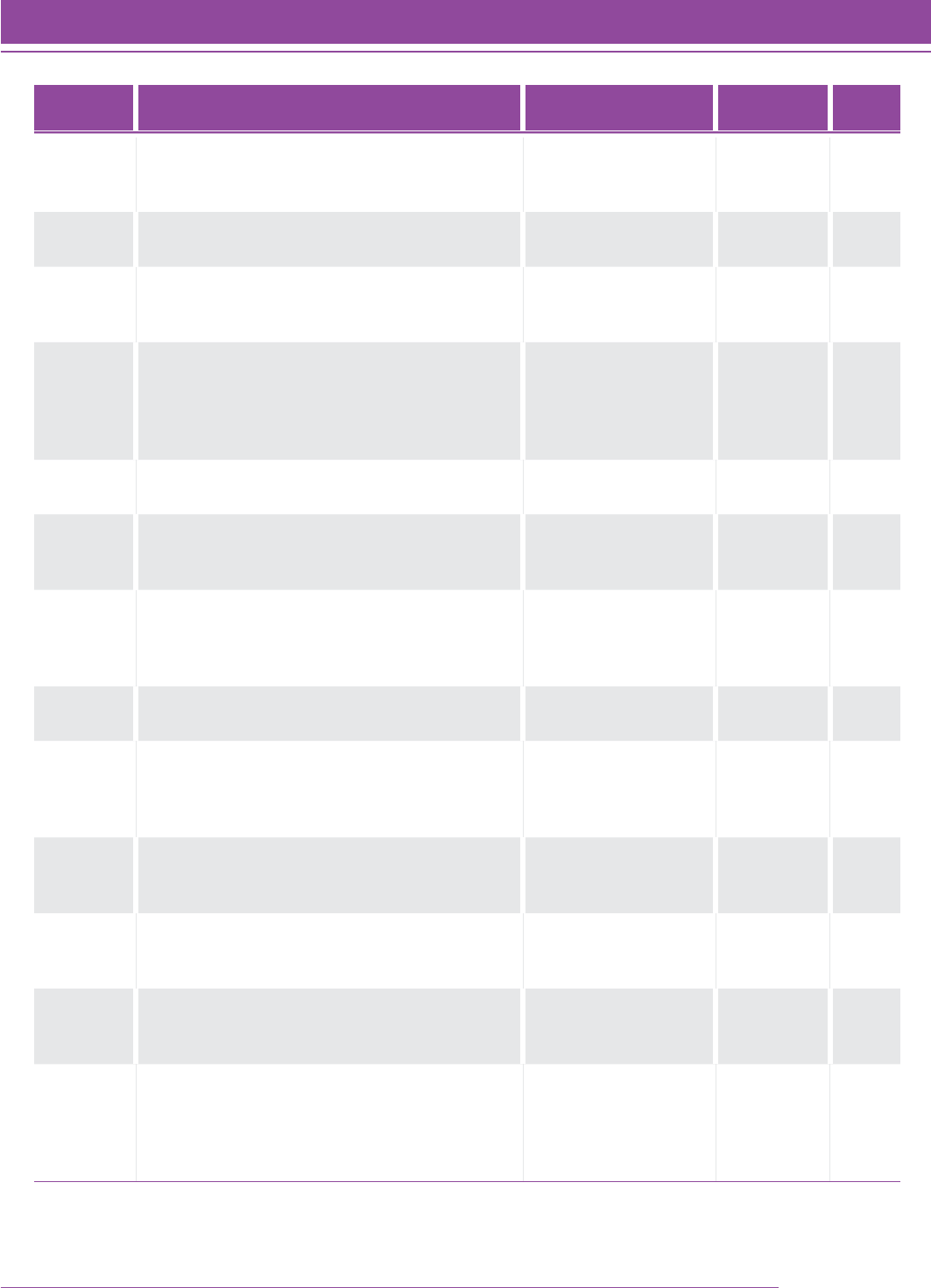

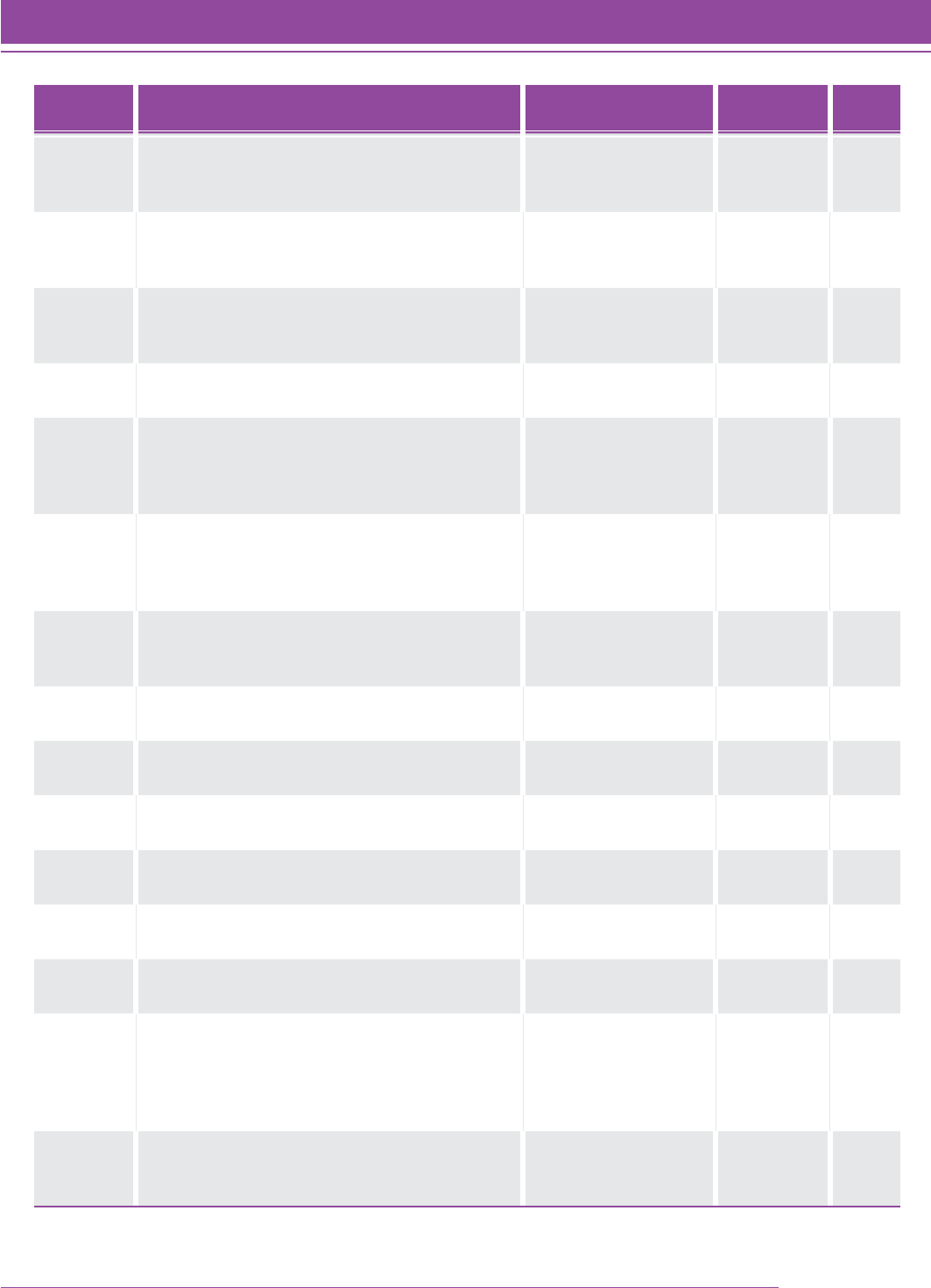

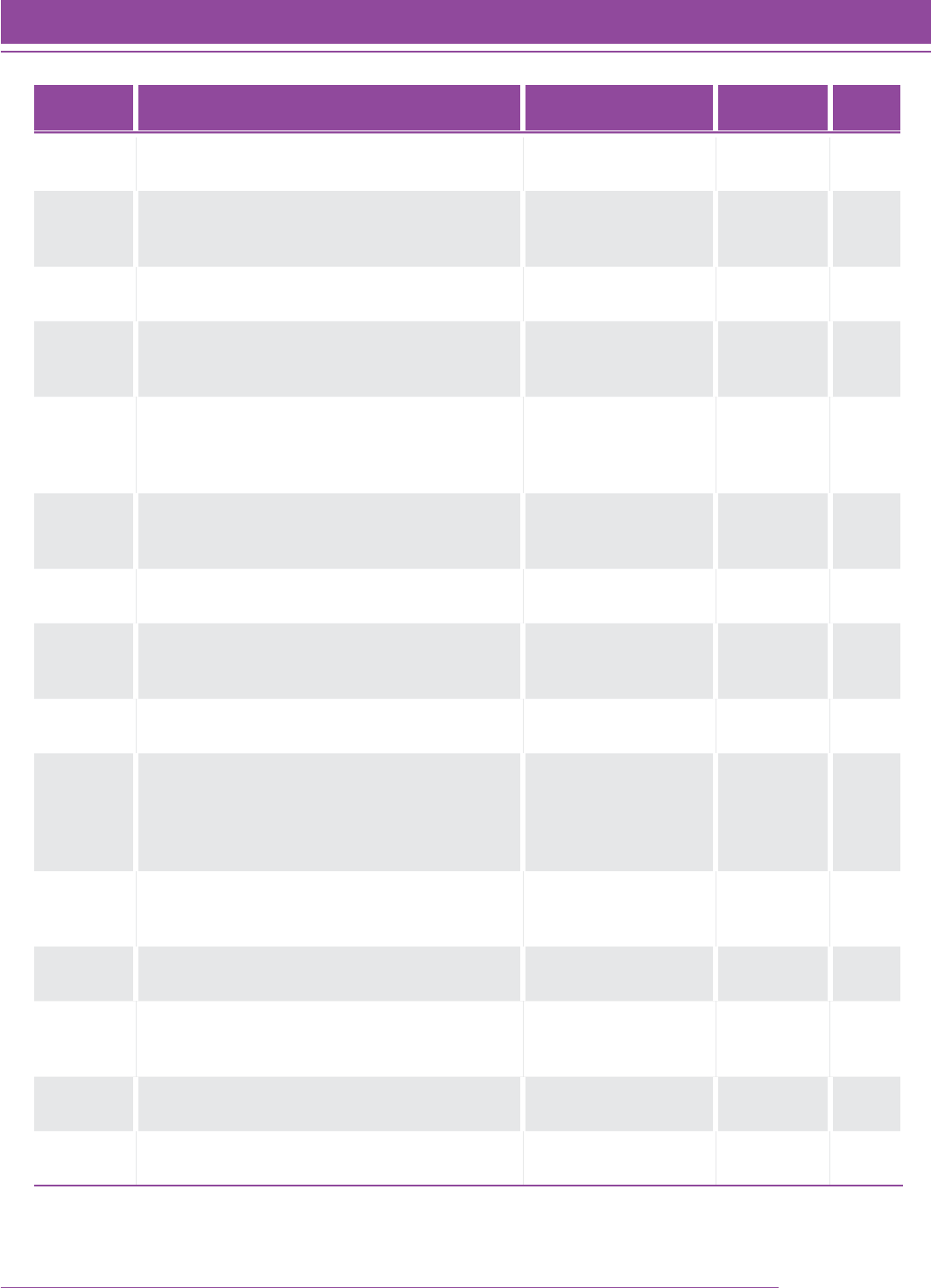

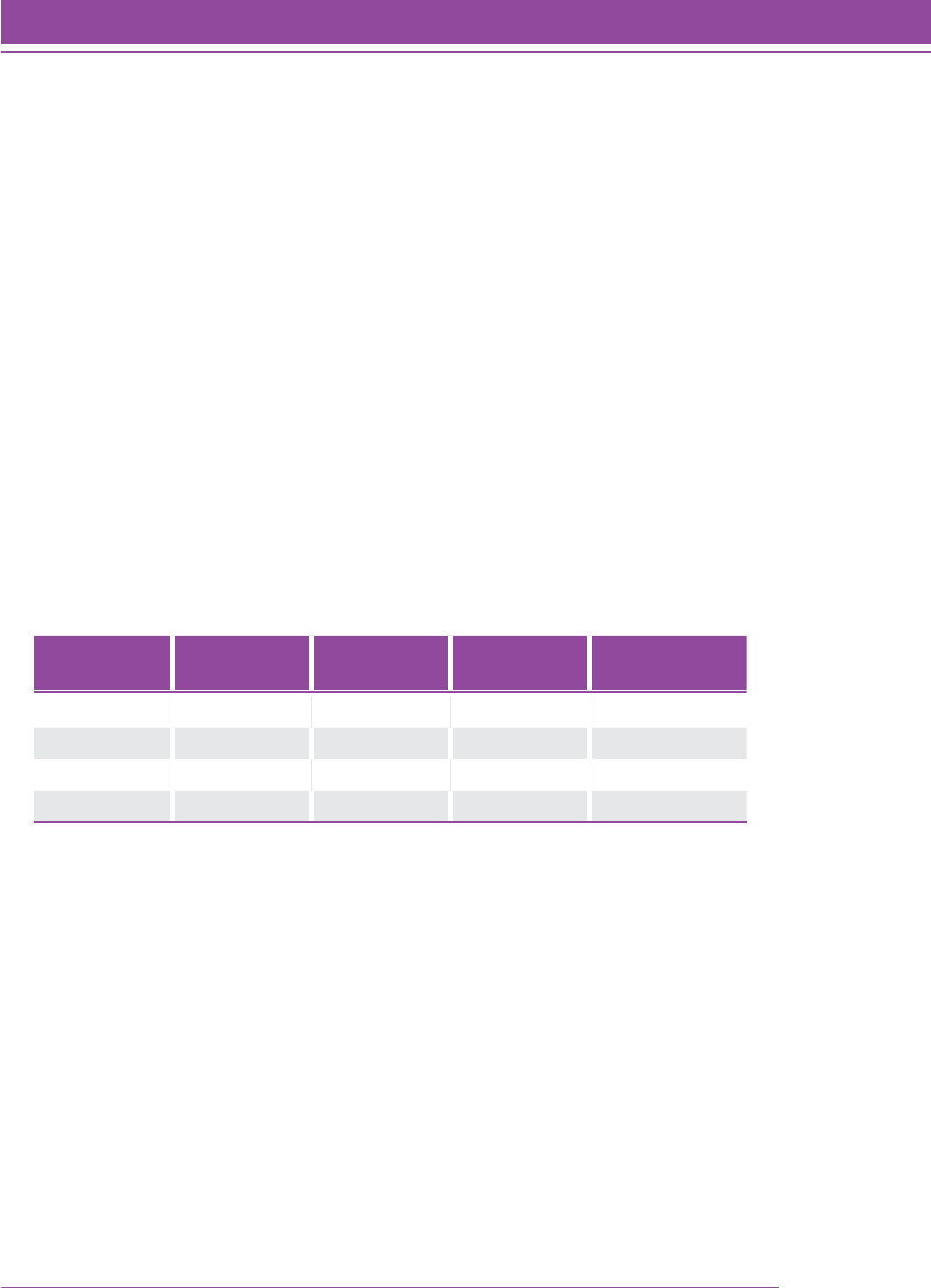

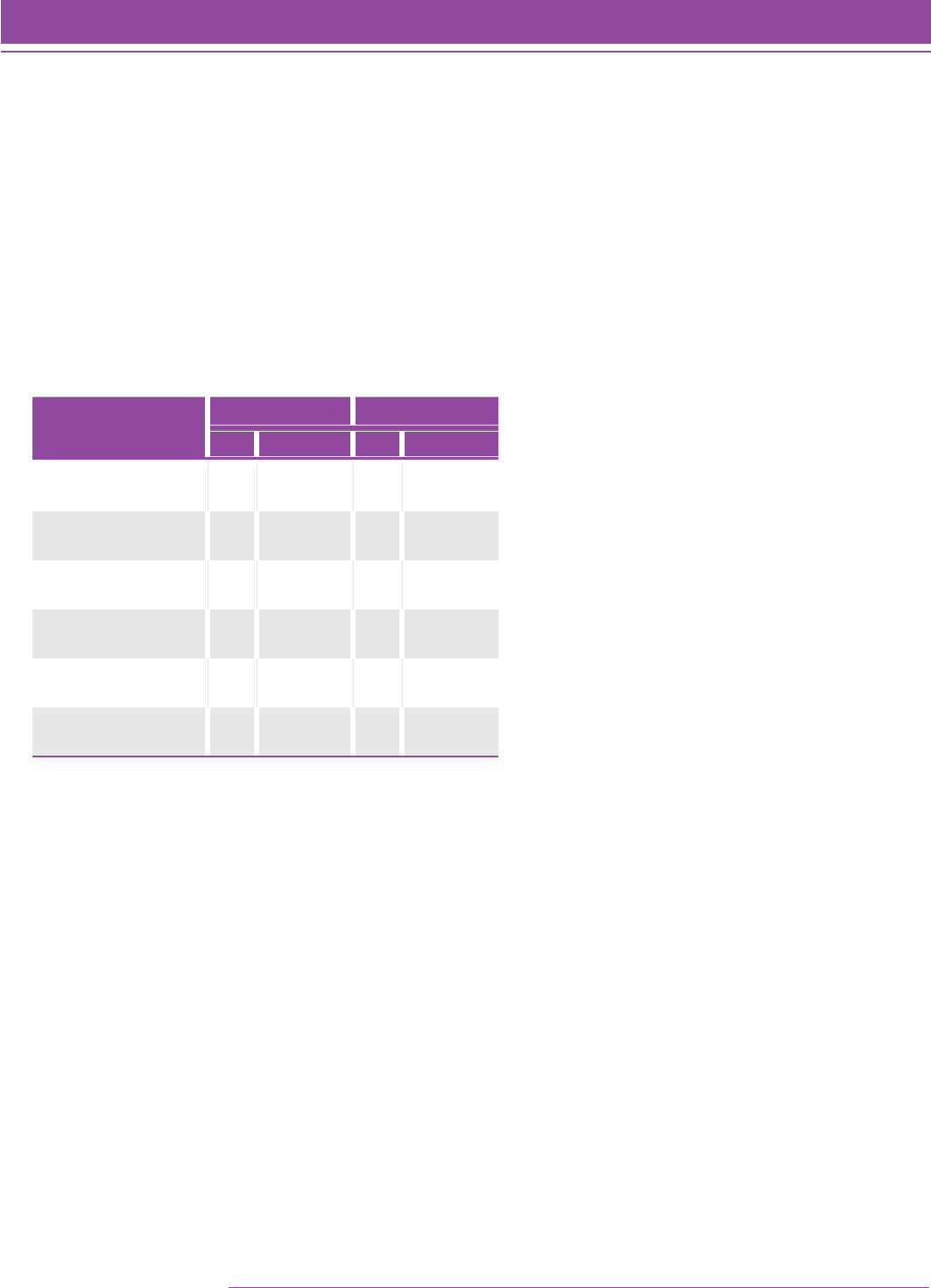

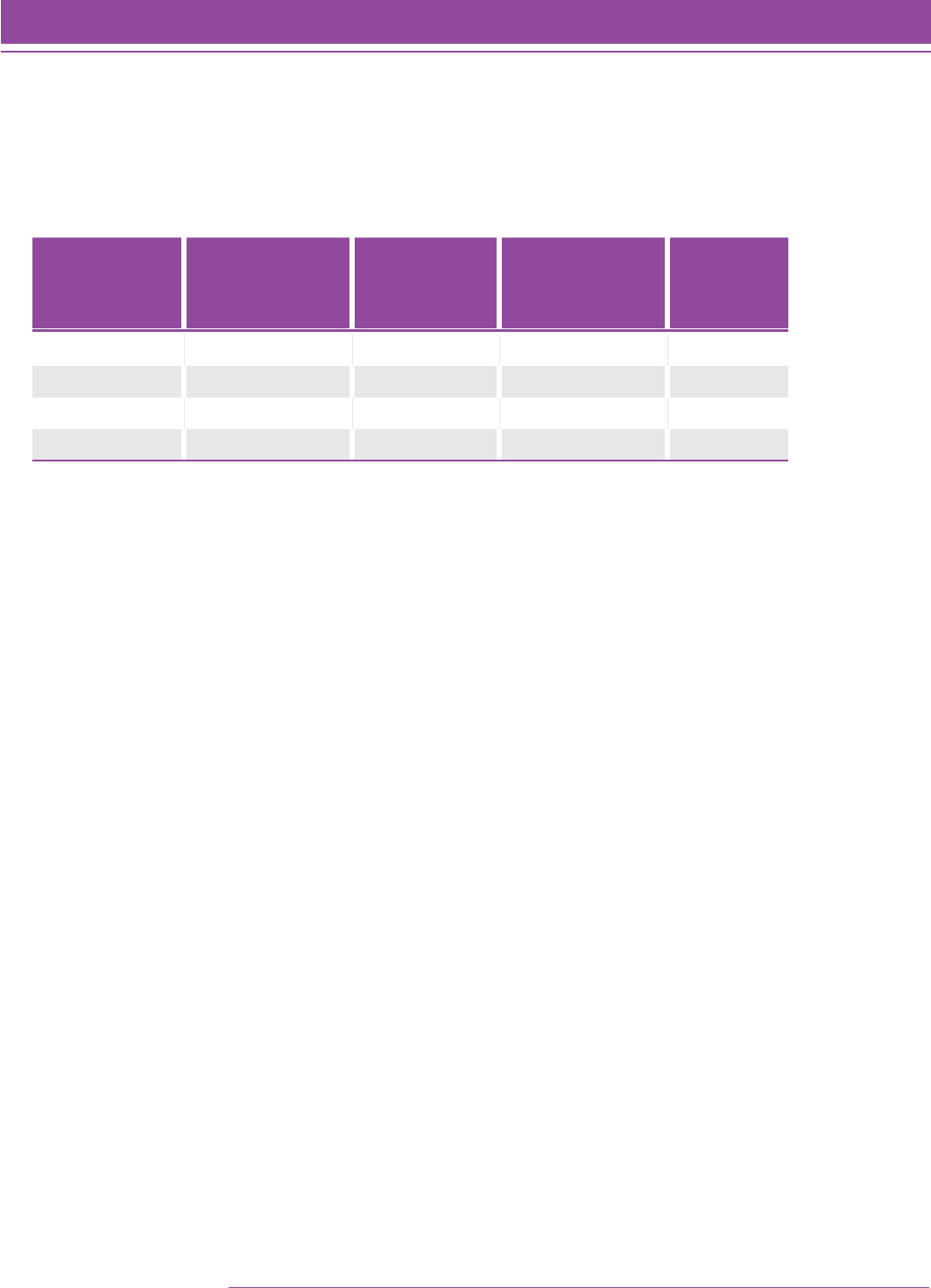

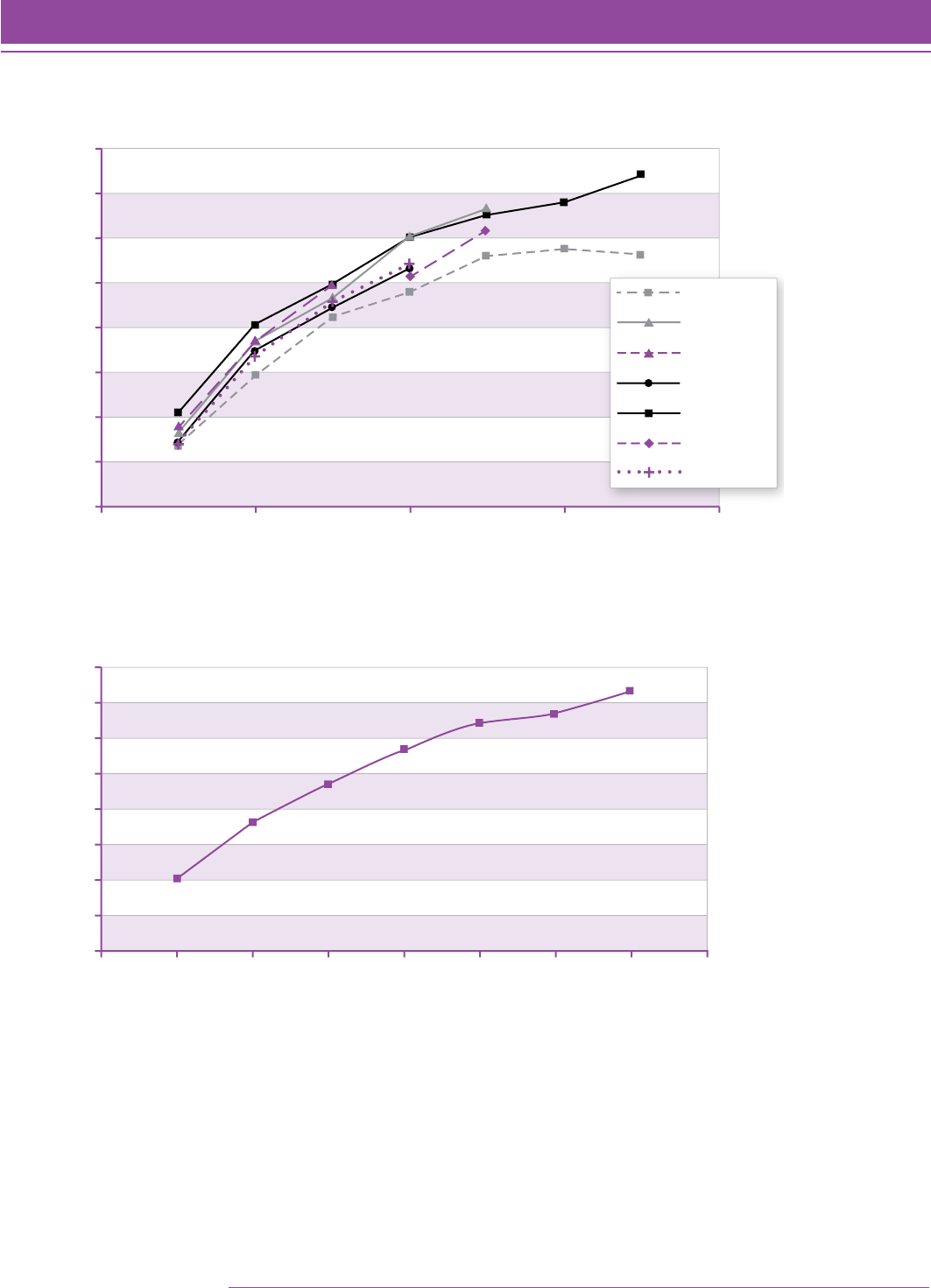

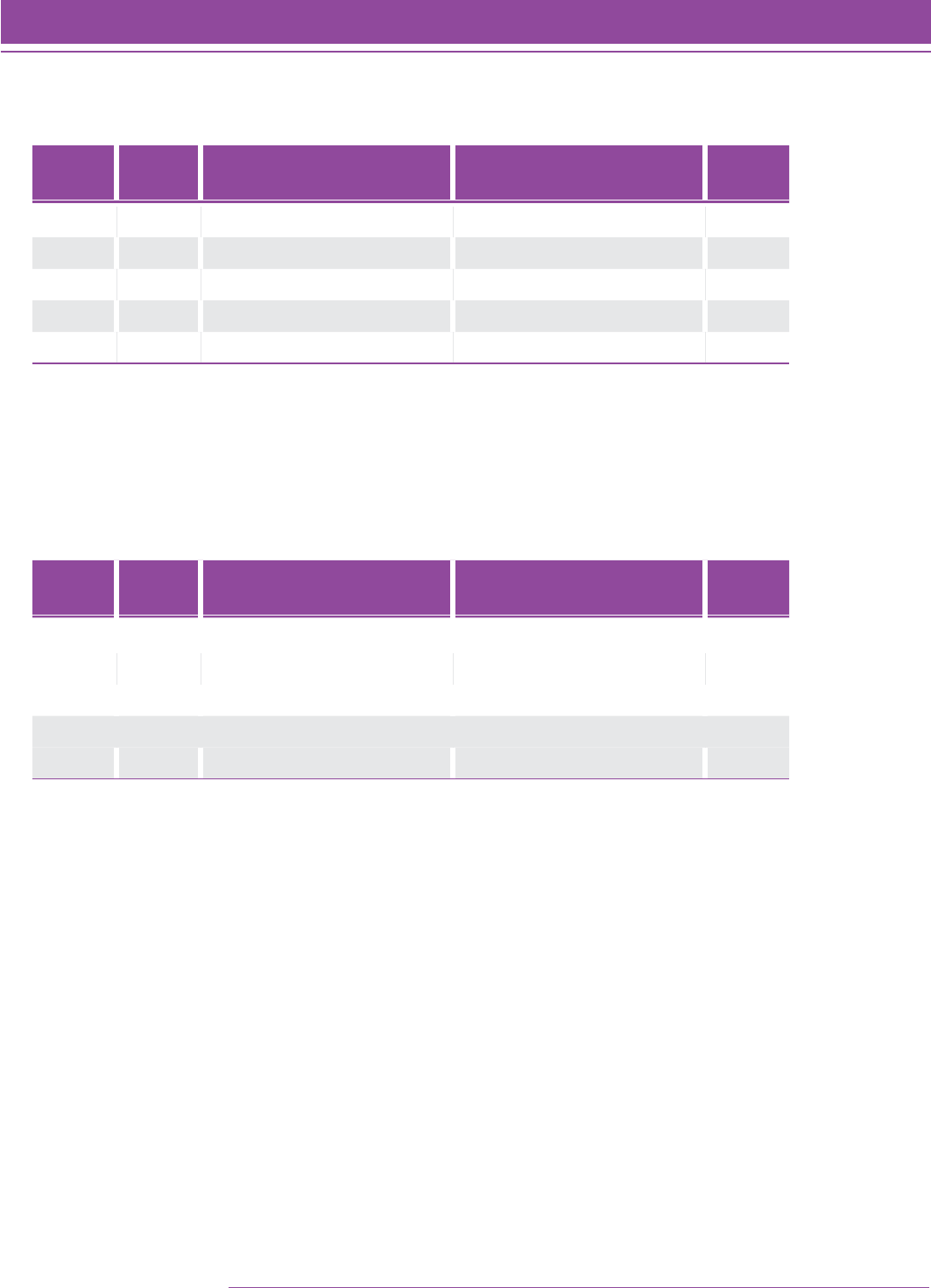

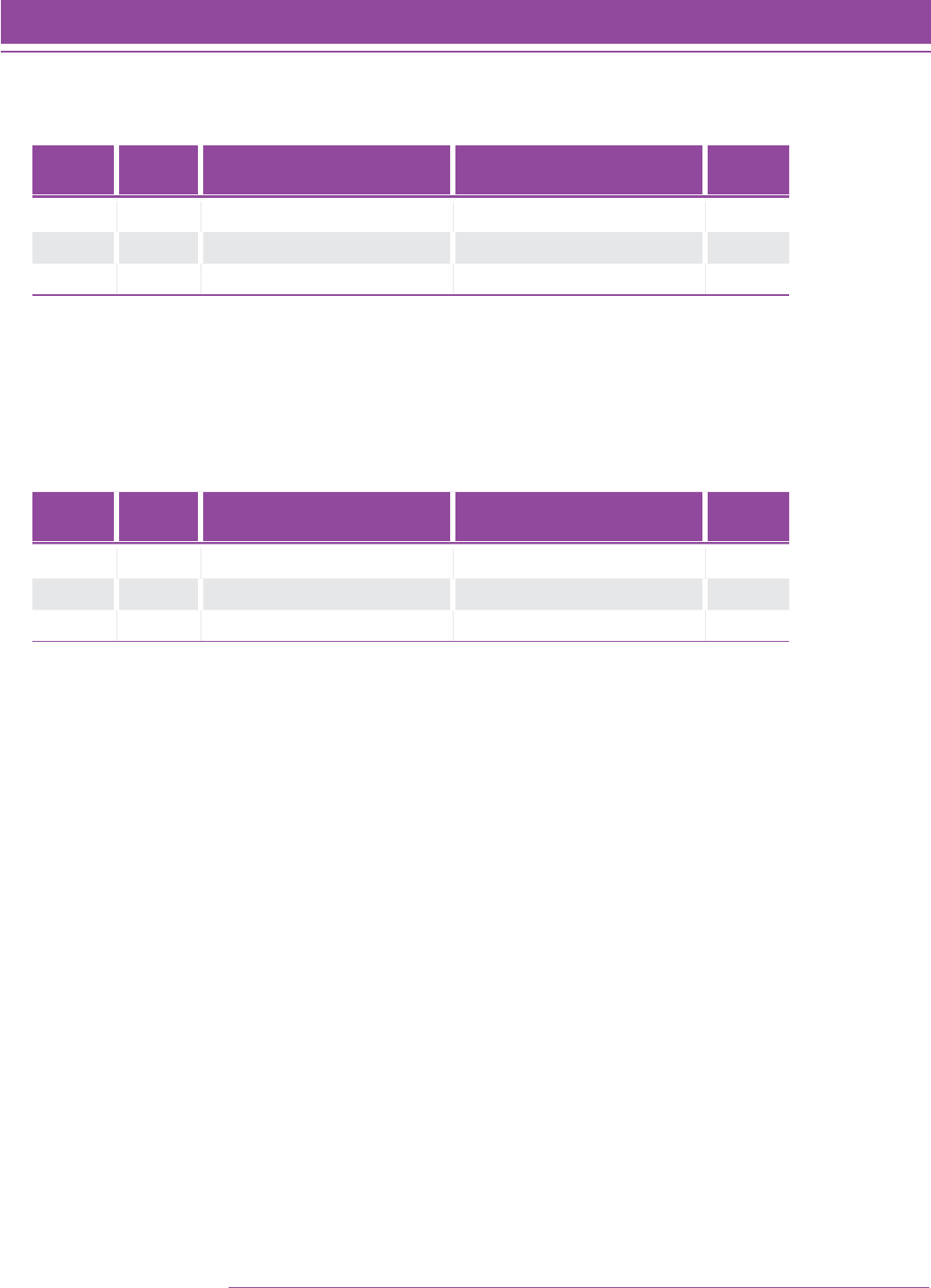

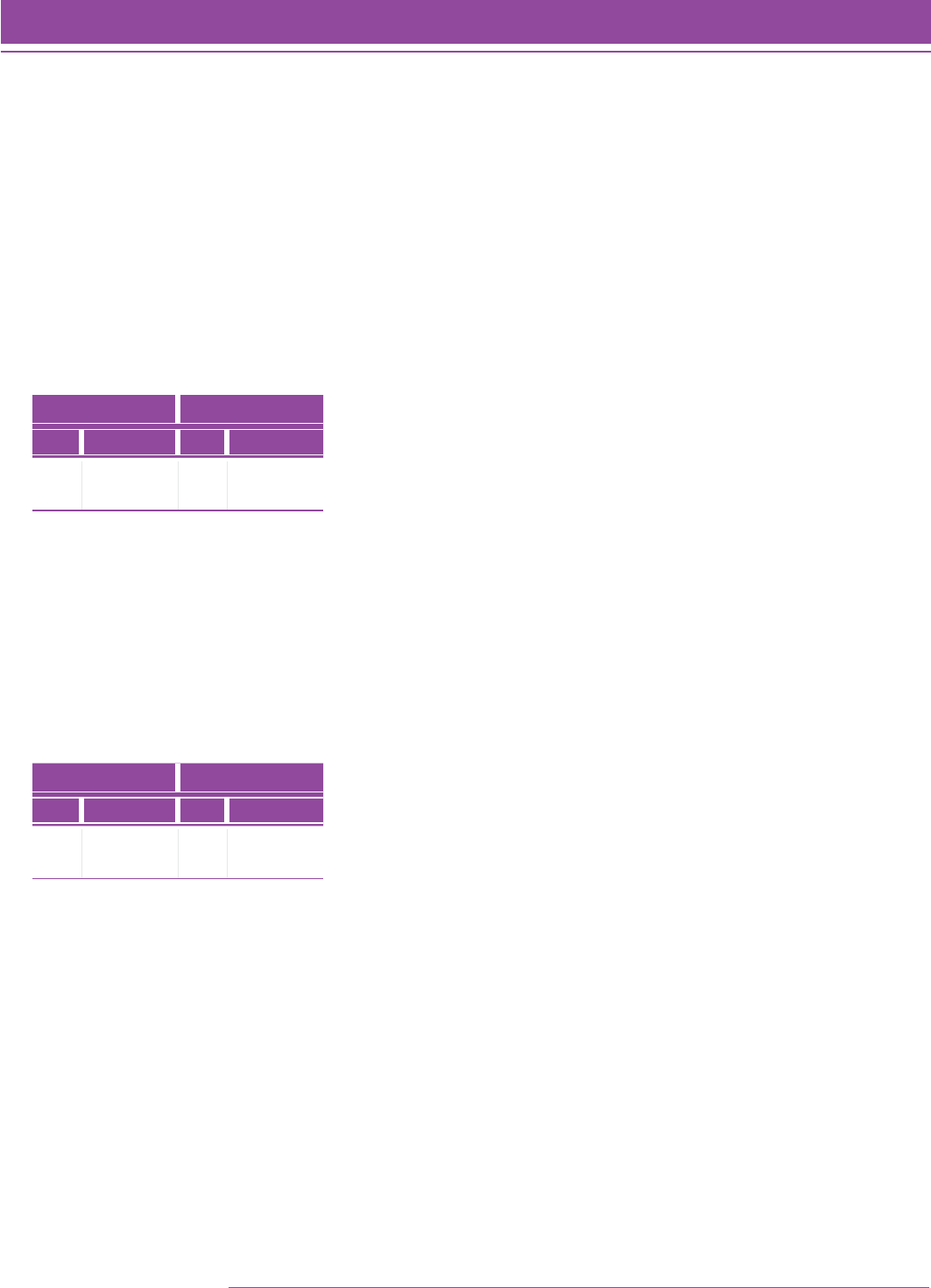

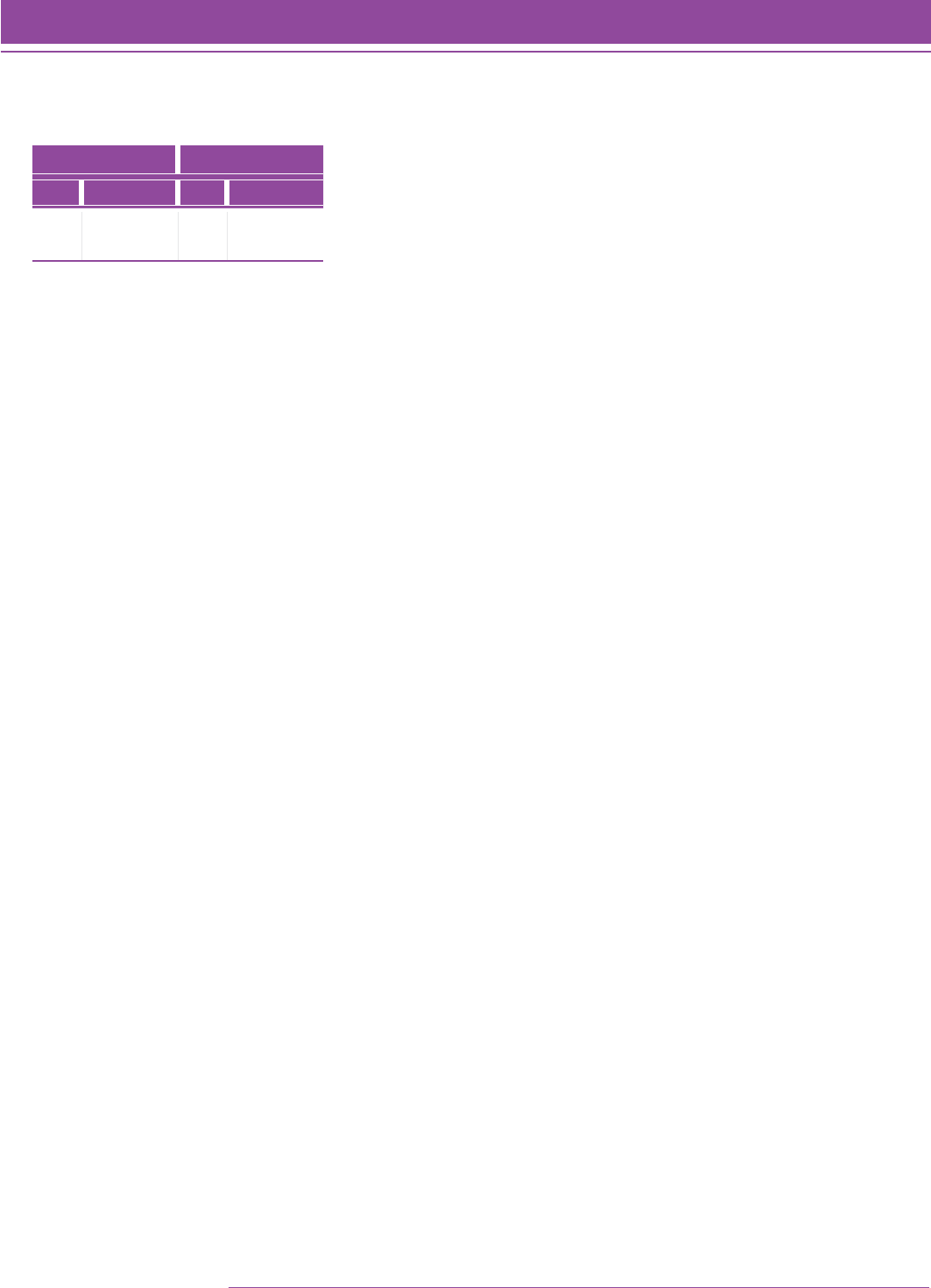

An SMI College & Career test reports students’ Quantile measures. In the SMI College & Career reports, student test

results are aligned with specific skills and concepts that are appropriate for instruction. For example, Figure 1 depicts

a report that specifies growth in the skills and concepts that a student is ready to learn and links those concepts to the

Common Core State Standards identification number and other Quantile-based instructional information.

FIGURE 1. Growth Report.

Growth Report

Printed by: Teacher

Printed on: 2/22/2015

TM ® & © Scholastic Inc. All rights reserved.

CLASS: 3rd Period

School: Lincoln Middle School

Teacher: Sarah Foster

Grade: 5

Time Period: 12/13/14–02/22/15

| Student | Grade | First Test Date | First Test Quantile® Measure / Performance Level | Last Test Date | Last Test Quantile® Measure / Performance Level | Growth in Quantile® Measure |
|---|---|---|---|---|---|---|
| Gainer, Jacquelyn | 5 | 12/13/14 | 925Q P | 02/22/15 | 1100Q A | 175Q |
| Hartsock, Shalanda | 5 | 12/13/14 | 595Q B | 02/22/15 | 750Q B | 155Q |
| Cho, Henry | 5 | 12/13/14 | 955Q P | 02/22/15 | 1100Q A | 155Q |
| Cooper, Maya | 5 | 12/13/14 | 700Q B | 02/22/15 | 820Q P | 120Q |
| Robinson, Tiffany | 5 | 12/13/14 | 390Q BB | u 02/22/15 | 485Q BB | 95Q |
| Cocanower, Jaime | 5 | 12/13/14 | 640Q B | 02/22/15 | 710Q B | 70Q |
| Garcia, Matt | 5 | 12/13/14 | 615Q B | 02/22/15 | 680Q B | 65Q |
| Terrell, Walt | 5 | 12/13/14 | 670Q B | 02/22/15 | 720Q B | 50Q |
| Enoki, Jeanette | 5 | u 12/13/14 | 750Q P | 02/22/15 | 800Q B | 50Q |
| Collins, Chris | 5 | u 12/13/14 | 855Q P | u 02/22/15 | 890Q P | 35Q |
| Morris, Timothy | 5 | 12/13/14 | 620Q B | 02/22/15 | 650Q B | 30Q |
| Ramirez, Jeremy | 5 | u 12/13/14 | 580Q B | 02/22/15 | 600Q B | 20Q |

KEY

EM: Emerging Mathematician

A: Advanced

P: Proficient

B: Basic

BB: Below Basic

u: Test taken in less than 15 minutes

YEAR-END PROFICIENCY RANGES

| Grade | Range |
|---|---|
| K | 10Q–175Q |
| 1 | 260Q–450Q |
| 2 | 405Q–600Q |
| 3 | 625Q–850Q |
| 4 | 715Q–950Q |
| 5 | 820Q–1020Q |
| 6 | 870Q–1125Q |
| 7 | 950Q–1175Q |
| 8 | 1030Q–1255Q |
| 9 | 1140Q–1325Q |
| 10 | 1220Q–1375Q |
| 11 | 1350Q–1425Q |
| 12 | 1390Q–1505Q |

USING THE DATA

Purpose: This report shows changes in student performance and growth on SMI over time.

Follow-Up: Provide opportunities to challenge students who show significant growth. Provide targeted intervention and support to students who show little growth.
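The growth column in Figure 1 can be read as the last Quantile measure minus the first, and the figure's key lists a year-end proficiency range for each grade. The Python sketch below illustrates both calculations under stated assumptions: the function names are hypothetical, the ranges are copied from the Figure 1 key, and the range check only reports whether a measure falls inside the listed range. It is not a description of how SMI College & Career assigns performance levels.

```python
# Year-end proficiency ranges (Quantile measures) as listed in the Figure 1 key.
YEAR_END_PROFICIENCY = {
    "K": (10, 175), "1": (260, 450), "2": (405, 600), "3": (625, 850),
    "4": (715, 950), "5": (820, 1020), "6": (870, 1125), "7": (950, 1175),
    "8": (1030, 1255), "9": (1140, 1325), "10": (1220, 1375),
    "11": (1350, 1425), "12": (1390, 1505),
}


def growth(first_measure: int, last_measure: int) -> int:
    """Change in Quantile measure between the first and last test."""
    return last_measure - first_measure


def in_year_end_range(grade: str, measure: int) -> bool:
    """True if the measure lies inside the listed range for the grade."""
    low, high = YEAR_END_PROFICIENCY[grade]
    return low <= measure <= high


# First row of Figure 1: a Grade 5 student moving from 925Q to 1100Q.
print(growth(925, 1100))               # 175
print(in_year_end_range("5", 925))     # True: inside 820Q-1020Q
print(in_year_end_range("5", 1100))    # False: above the listed range
```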


After an initial assessment with SMI College & Career, it is possible to monitor progress, predict the student’s

likelihood of success when instructed on mathematical skills and concepts, and report on actual student growth

toward the objective of algebra completion and, by extension, college and career readiness.

Students’ Quantile measures indicate their readiness for instruction on skills and concepts within a range of 50Q

above and below their Quantile measure. Students should be successful at independent practice with skills and

concepts that are about 150Q to 250Q below their Quantile measure. With SMI College & Career test results,

educators can choose materials and resources for targeted instruction and practice.
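The two ranges described above can be sketched as a small helper. This is only an illustration of the arithmetic in the preceding paragraph; the function name `instructional_ranges` and the dictionary keys are hypothetical and are not part of SMI College & Career.

```python
def instructional_ranges(quantile_measure: int) -> dict:
    """Return the Quantile ranges described in the text for one student."""
    return {
        # Ready for instruction: 50Q below to 50Q above the student's measure.
        "instruction": (quantile_measure - 50, quantile_measure + 50),
        # Independent practice: about 150Q to 250Q below the student's measure.
        "independent_practice": (quantile_measure - 250, quantile_measure - 150),
    }


ranges = instructional_ranges(750)
print(ranges["instruction"])           # (700, 800)
print(ranges["independent_practice"])  # (500, 600)
```

For a student with a measure of 750Q, the sketch gives 700Q to 800Q for targeted instruction and roughly 500Q to 600Q for independent practice.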

On a school-wide or instructional level, SMI College & Career results can be used to screen for intervention and

acceleration, measure progress at benchmarking intervals, group students for differentiated instruction, provide an

indication of outcomes on summative assessments, provide an independent measure of programmatic success, and

inform district decision making.

SMI supports school districts’ efforts to accelerate the learning path of struggling students. State educational agencies (SEAs), local educational agencies (LEAs), and schools can use funds under Title I, Part A of the Elementary and Secondary Education Act of 1965 (ESEA), including those associated with the American Recovery and Reinvestment Act of 2009 (ARRA), to identify, create, and structure opportunities and strategies to strengthen education, drive school reform, and improve the academic achievement of at-risk students (US Department of Education, 2009). Tiered intervention strategies can be used to provide support for students who are “at risk” of not meeting state performance levels that define “proficient” achievement.

One such tiered approach is Response to Intervention (RTI), which involves providing the most appropriate

instruction, services, and scientifically based interventions to struggling students—with increasing intensity at each

tier of instruction (Cortiella, 2005).

As an academic assessment that can be used as a universal screener of all students, SMI College & Career can

also be used to identify those students who are “at risk” and provide student grouping recommendations for

appropriate instruction. SMI College & Career can be administered three to five times per year to monitor students’

growth. Regular monitoring of students’ progress is critical in determining if a student should move from one tier of

intervention to another and to determine the effectiveness of the intervention.


Another instructional approach supported by SMI College & Career is differentiated instruction in the Tier I classroom

(Tomlinson, 2001). By providing direct instructional recommendations for each student or each group of students and

linking those recommendations to the skills and concepts of the Quantile Framework, SMI College & Career provides

data to target and pace instruction.

Limitations of Scholastic Math Inventory College & Career

SMI College & Career uses an algorithm to ensure that each assessment is targeted to measure each student’s readiness for instruction. Teachers and administrators can use the results to identify the mathematical skills and concepts that are most appropriate for their students.

However, as with any assessment, SMI College & Career is only one source of evidence about a student’s mathematical understanding. Consequential decisions are best made using multiple sources of evidence. Other sources include student work such as homework and unit test results, state test data, adherence to mathematics curriculum and pacing guides, student motivation, and teacher judgment.

One measure of student performance, taken on one day, is never sufficient to make high-stakes, student-specific decisions such as summer school placement or retention.


Theoretical Foundation and Validity of the Quantile Framework for Mathematics

The Quantile Framework is the backbone on which the mathematical skills and concepts assessed in SMI College

& Career are mapped. The Quantile Framework is a scale that describes a student’s mathematical achievement.

Similar to how degrees on a thermometer measure temperature, the Quantile Framework uses a common metric—

the Quantile—to scientifically measure a student’s ability to reason mathematically, monitor a student’s readiness

for mathematics instruction, and locate a student on its taxonomy of mathematical skills, concepts, and applications.

The Quantile Framework uses this common metric to measure many different aspects of education in mathematics.

The same metric can be applied to measure the materials used in instruction, to calibrate the assessments used to

monitor instruction, and to interpret the results that are derived from the assessments. The result is an anchor to

which resources, concepts, skills, and assessments can be connected.

There are dozens of mathematics tests that measure a common construct and report results in proprietary, non-exchangeable metrics. Not only are all of the tests using different units of measurement, but all use different scales

on which to make measurements. Consequently, it is difficult to connect the test results with materials used in the

classroom. The alignment of materials and linking of assessments with the Quantile Framework enables educators,

parents, and students to communicate and improve mathematics learning. The benefits of having a common metric

include being able to:

• Develop individual multiyear growth trajectories that denote a developmental continuum from the early

elementary level to Algebra II and Precalculus. The Quantile scale is vertically constructed, so the meaning

of a Quantile measure is the same regardless of grade level.

• Monitor and report student growth that meets the needs of state-initiated accountability systems

• Help classroom teachers make day-to-day instructional decisions that foster acceleration and growth

toward algebra readiness and through the next several years of secondary mathematics

To develop the Quantile Framework, the following preliminary tasks were undertaken:

• Building a structure of mathematical performance that spans the developmental continuum from

Kindergarten content through Geometry, Algebra II, and Precalculus content

• Developing a bank of items that had been field tested

• Developing the Quantile scale (multiplier and anchor point) based on the calibrations of the field-test

items

• Validating the measurement of mathematics achievement as defined by the Quantile Framework

Each of these tasks is described in the sections that follow. The implementation of the Quantile Framework in the

development of SMI College & Career, as well as the use of SMI College & Career and the interpretation of results, is

described in later sections of this guide.


The Quantile Framework for Mathematics Taxonomy

To develop a framework of mathematical performance, an initial structure needs to be established. The structure of

the Quantile Framework is organized around two guiding principles—(1) mathematics is a content area, and

(2) learning mathematics is developmental in nature.

The National Mathematics Advisory Panel report (2008, p. xix) recommended the following:

To prepare students for Algebra, the curriculum must simultaneously develop conceptual understanding,

computational fluency, and problem-solving skills . . . [t]hese capabilities are mutually supportive, each

facilitating learning of the others. Teachers should emphasize these interrelations; taken together, conceptual

understanding of mathematical operations, fluent execution of procedures, and fast access to number

combinations jointly support effective and efficient problem solving.

When developing the Quantile Framework, MetaMetrics recognized that in order to adequately address the

scope and complexity of mathematics, multiple proficiencies and competencies must be assessed. The Quantile

Framework is an effort to recognize and define a developmental context of mathematics instruction. This notion is

consistent with the National Council of Teachers of Mathematics’ (NCTM) conclusions about the importance of school

mathematics for college and career readiness presented in Administrator's Guide: Interpreting the Common Core

State Standards to Improve Mathematics Education, published in 2011.

Strands as Sub-domains of Mathematical Content

A strand is a major subdivision of mathematical content. The strands describe what students should know and be

able to do. The National Council of Teachers of Mathematics (NCTM) publication Principles and Standards for School

Mathematics (2000, hereafter NCTM Standards) outlined ten standards—five content standards and five process

standards. The content standards are Number and Operations, Algebra, Geometry, Measurement, and Data Analysis and Probability. The process standards are Problem Solving, Reasoning and Proof, Communication, Connections, and Representation.

As of March 2014, the Common Core State Standards for Mathematics (CCSS) have been adopted in 44 states,

the Department of Defense Education Activity, Washington DC, Guam, the Northern Mariana Islands, and the US

Virgin Islands. The CCSS identify critical areas of mathematics that students are expected to learn each year from

Kindergarten through Grade 8. The critical areas are divided into domains that differ at each grade level and include

Counting and Cardinality, Operations and Algebraic Thinking, Number and Operations in Base Ten, Number and

Operations—Fractions, Ratios and Proportional Relationships, the Number System, Expressions and Equations,

Functions, Measurement and Data, Statistics and Probability, and Geometry. The CCSS for Grades 9–12 are

organized by six conceptual categories: Number and Quantity, Algebra, Functions, Modeling, Geometry, and Statistics

and Probability (NGA Center & CCSSO, 2010a).

The six strands of the Quantile Framework bridge the Content Standards of the NCTM Standards and the domains

specified in the CCSS.

1. Number Sense. Students with number sense are able to understand a number as a specific amount,

a product of factors, and the sum of place values in expanded form. These students have an in-depth

understanding of the base-ten system and understand the different representations of numbers.

2. Numerical Operations. Students perform operations using strategies and standard algorithms on different

types of numbers but also use estimation to simplify computation and to determine how reasonable their

results are. This strand also encompasses computational fluency.


3. Geometry. The characteristics, properties, and comparison of shapes and structures are covered by

geometry, including the composition and decomposition of shapes. Not only does geometry cover abstract

shapes and concepts, but it provides a structure that can be used to observe the world.

4. Algebra and Algebraic Thinking. The use of symbols and variables to describe the relationships between

different quantities is covered by algebra. By representing unknowns and understanding the meaning

of equality, students develop the ability to use algebraic thinking to make generalizations. Algebraic

representations can also allow the modeling of an evolving relationship between two or more variables.

5. Data Analysis and Probability. The gathering of data and interpretation of data are included in data

analysis, probability, and statistics. The ability to apply knowledge gathered using mathematical methods to

draw logical conclusions is an essential skill addressed in this strand.

6. Measurement. The description of the characteristics of an object using numerical attributes is covered by

measurement. The strand includes using the concept of a unit to determine length, area, and volume in the

various systems of measurement, and the relationship between units of measurement within and between

these systems.

The Quantile Skill and Concept

Within the Quantile Framework, a Quantile Skill and Concept, or QSC, describes a specific mathematical skill or

concept a student can acquire. These QSCs are arranged in an orderly progression to create a taxonomy called the

Quantile scale. Examples of QSCs include:

1. Know and use addition and subtraction facts to 10 and understand the meaning of equality

2. Use addition and subtraction to find unknown measures of nonoverlapping angles

3. Determine the effects of changes in slope and/or intercepts on graphs and equations of lines

The QSCs used within the Quantile Framework were developed during Spring 2003, for Grades 1–8, Grade 9

(Algebra I), and Grade 10 (Geometry). The framework was extended to Algebra II and revised during Summer and Fall

2003. The content was finally extended to include material typically taught in Kindergarten and Grade 12 (Precalculus) during Summer and Fall 2007.

The first step in developing a content taxonomy was to review the curricular frameworks from the following sources:

• NCTM Principles and Standards for School Mathematics (National Council of Teachers of Mathematics,

2000)

• Mathematics Framework for the 2005 National Assessment of Educational Progress: Prepublication Edition

(NAGB, 2005)

• North Carolina Standard Course of Study (Revised in 2003 for Kindergarten through Grade 12) (NCDPI, 1996)


• California Mathematics Framework and state assessment blueprints: Mathematics Framework for California

Public Schools: Kindergarten Through Grade Twelve (2000 Revised Edition), Mathematics Content Standards

for California Public Schools: Kindergarten Through Grade Twelve (December 1997), blueprints document

for the Star Program California Standards Tests: Mathematics (California Department of Education, adopted

by SBE October 9, 2002), and sample items for the California Mathematics Standards Tests (California

Department of Education, January 2002).

• Florida Sunshine State Standards: Sunshine State Standards Grade Level Expectations for Mathematics,

Grade 2 through Grade 10. The Sunshine State Standards “are the centerpiece of a reform effort in Florida

to align curriculum, instruction, and assessment” (Florida Department of Education, 2007, p. 1).

• Illinois: The Illinois Learning Standards for Mathematics. Goals 6 through 10 emphasize the following:

Number and Operations, Measurement, Algebra, Geometry, and Data Analysis and Statistics—Mathematics

Performance Descriptors, Grades 1–5 and Grades 6–12 (2002).

• Texas Essential Knowledge and Skills: Texas Essential Knowledge and Skills for Mathematics (TEKS)

was adopted by the Texas State Board of Education and became effective on September 1, 1998. The

TEKS, a state-mandated curriculum, was “specifically designed to help students to make progress . . . by

emphasizing the knowledge and skills most critical for student learning” (TEA, 2002, p. 4).

The review of the content frameworks resulted in the development of a list of QSCs spanning mathematical

knowledge from Kindergarten through Grade 12 (college and career readiness or precalculus). Each QSC is aligned

with one of the six content strands. Currently, there are approximately 549 QSCs, which can be viewed and searched

at www.scholastic.com/SMI or www.Quantiles.com.

Each QSC consists of a description of the content, a unique identification number, the grade at which it typically first

appears, and the strand with which it is associated.
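The four parts of a QSC listed above map naturally onto a small record type. The sketch below is one hypothetical way to represent such a record; the class name, field names, the identifier `QSC-EXAMPLE`, and the grade and strand assigned to the sample entry are all placeholders for illustration and are not taken from the Quantile Framework database.

```python
from dataclasses import dataclass

# The six strands named in this guide.
STRANDS = (
    "Number Sense",
    "Numerical Operations",
    "Geometry",
    "Algebra and Algebraic Thinking",
    "Data Analysis and Probability",
    "Measurement",
)


@dataclass(frozen=True)
class QSC:
    qsc_id: str        # unique identification number (format is an assumption)
    description: str   # description of the content
    first_grade: str   # grade at which the QSC typically first appears
    strand: str        # one of the six strands

    def __post_init__(self):
        # Each QSC is aligned with exactly one of the six strands.
        if self.strand not in STRANDS:
            raise ValueError(f"unknown strand: {self.strand}")


example = QSC(
    qsc_id="QSC-EXAMPLE",  # placeholder identifier
    description="Determine the effects of changes in slope and/or "
                "intercepts on graphs and equations of lines",
    first_grade="Algebra I",                  # placeholder grade
    strand="Algebra and Algebraic Thinking",  # assumed strand
)
print(example.strand)
```

Validating the strand at construction time mirrors the statement that each QSC is aligned with one of the six content strands.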


Quantile Item Bank

The second task in the development of the Quantile Framework for Mathematics was to develop and field-test a

bank of items that could be used in future linking studies and calibration and development projects. Item bank

development for the Quantile Framework went through several stages—content specification, item writing and

review, field-testing and analyses, and final evaluation.

Content Specification

Each QSC developed during the design of the Quantile Framework was paired with a particular strand and identified

as typically being taught at a particular grade level. The curricular frameworks from Florida, North Carolina, Texas,

and California were synthesized to identify the appropriate grade level for each QSC. If a QSC was included in any of

these state frameworks, it was then added to the list of QSCs for the item bank utilized in the Quantile Framework

field study.

During Summer and Fall 2003, more than 1,400 items were developed to assess the QSCs associated with content

extending from first grade through Algebra II. The items were written and reviewed by mathematics educators

trained to develop multiple-choice items (Haladyna, 1994). Each item was associated with a strand and a QSC. In the

development of the Quantile Framework item bank, the reading demand of the items was kept as low as possible to

ensure that the items were testing mathematics achievement and not reading.

Item Writing and Review

Item writers were mathematics teachers and item-development specialists with experience in mathematics education at various levels. Employing individuals with a range of experiences helped to ensure that the items were valid measures. Item writers were provided with training materials concerning the development of multiple-choice items and the Quantile Framework. Included in the item-writing materials were incorrect and ineffective items that illustrated the criteria used to evaluate items, along with corrections based on those criteria. The final phase of item-writer training was a short practice session with three items.

Item writers were given additional training related to sensitivity issues. Some item-writing materials address these

issues and identify areas to avoid when selecting passages and developing items. These materials were developed

based on work published concerning universal design and fair access, including the issues of equal treatment of the

sexes, fair representation of minority groups, and fair representation of disabled individuals.


A group of specialists representing various perspectives—test developers, editors, curriculum specialists, and

mathematics specialists—reviewed and edited the items. These individuals examined each item for sensitivity

issues and for the quality of the response options. During the second stage of the item review process, items were

approved, approved with edits, or deleted.

Field Testing and Analyses

The next stage in the development of the Quantile item bank was the field-testing of all of the items. First, individual

test items were compiled into leveled assessments distributed to groups of students. The data gathered from these

assessments were then analyzed using a variety of statistical methods. The final result was a bank of test items

appropriately placed within the Quantile scale, suitable for determining the mathematical achievement of students

on this scale.

Assessments for Field Testing

Assessment forms were developed for 10 levels for the purposes of field-testing. Levels 2 through 8 were aligned

with the typical content taught in Grades 2–8. Level 9 was aligned with the typical content taught in Algebra I. Level

10 was aligned with the typical content taught in Geometry. Finally, Level 11 was aligned with the typical content

taught in Algebra II. A total of 30 test forms were developed (three per assessment level), and each test form was

composed of 30 items.

Creating the taxonomy of QSCs across all grade levels involved linking the field test forms such that the concepts

and difficulty levels between tests overlapped. This was achieved by designating a linking set of items for each

grade level. These items were administered to the originally intended grade and were also placed on off-grade forms

(above or below one grade).

With the structure of the test forms established, the forms needed to be populated with the appropriate items. First,

a pool of items was formed from the items developed during the item-writing phase. The repository consisted of

66 items for each grade level, from Grade 2 to Algebra II (10 levels total). Of these, 54 items were designated on-grade-level items and would only appear on test forms for that particular grade level. The remaining 12 items were

designated linking items and could appear on test forms one grade level above or below the level of the item.

The final field tests were composed of 686 unique items. Besides the 660 items mentioned above, two sets of 12

linking items were developed to serve as below-level items for Grade 2 and above-level items for Algebra II. Two

additional Algebra II items were developed to ensure coverage of all the QSCs at that level.
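The item counts in the two preceding paragraphs can be checked with a few lines of arithmetic. The variable names below are illustrative only; every number comes from the text.

```python
# Counts taken from the text.
LEVELS = 10                # Grade 2 through Algebra II
ON_GRADE_PER_LEVEL = 54    # appear only on forms for their own level
LINKING_PER_LEVEL = 12     # may also appear one level above or below

pool_per_level = ON_GRADE_PER_LEVEL + LINKING_PER_LEVEL  # 66 items per level
core_pool = pool_per_level * LEVELS                       # 660 items

extra_linking = 2 * 12     # below-level set for Grade 2, above-level set for Algebra II
extra_algebra_2 = 2        # added to cover all Algebra II QSCs

total_unique_items = core_pool + extra_linking + extra_algebra_2
print(total_unique_items)  # 686
```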


The three test forms for each grade level were developed using a domain-sampling model in which items were

randomly assigned within the QSC to a test form. To achieve the goal of linking the test forms within a grade level,

as well as across grade levels, the linking items were utilized as follows: Each test form contained six items from the

linking set at the same grade level as the test form. For across-grade linking, four items were added to each field-test form from the below-grade linking set, and two items were added to each field-test form from the above-grade linking set. In summary, the linking items were used such that test items overlapped on two forms within the same grade level and on two or more forms from different grade levels.
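The composition of a single 30-item form follows from the linking counts above. The sketch below assumes that every slot not filled by a linking item holds an on-grade item; the guide does not state this directly, but the resulting total of 54 matches the on-grade pool per level, which is consistent with each on-grade item appearing on exactly one of the three forms. Variable names are illustrative.

```python
FORM_LENGTH = 30       # items per test form
FORMS_PER_LEVEL = 3    # Forms A, B, and C

# Linking items placed on each form, as described in the text.
LINKING_SLOTS = {"same_grade": 6, "below_grade": 4, "above_grade": 2}

linking_per_form = sum(LINKING_SLOTS.values())                # 12
on_grade_per_form = FORM_LENGTH - linking_per_form            # 18 (assumed remainder)
on_grade_across_forms = on_grade_per_form * FORMS_PER_LEVEL   # 54

print(linking_per_form, on_grade_per_form, on_grade_across_forms)  # 12 18 54
```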

Linking the test levels vertically (across grades) employed a common-item test design (design in which items are

used on multiple forms). In this design, multiple tests are given to nonrandom groups, and a set of common items

is included in the test administration to allow some statistical adjustments for possible sample-selection bias. This

design is most advantageous where the number of items to be tested (treatments) is large and the consideration of

cost (in terms of time) forces the experiment to be smaller than is desired (Cochran & Cox, 1957).

The Quantile Framework Field Study

The Quantile Framework field study was conducted in February 2004. Thirty-seven schools from 14 districts across

six states (California, Indiana, Massachusetts, North Carolina, Utah, and Wisconsin) agreed to participate in the study.

Data were received from 34 of the schools (two elementary schools and one middle school did not return data). A

total of 9,847 students in Grades 2 through 12 were tested. The number of students tested per school ranged from

74 to 920. The schools were diverse in terms of geographic location, size, and type of community (e.g., suburban;

small town, small city, or rural communities; and urban). See Table 1 for information about the sample at each grade

level and the total sample. See Table 2 for test administration forms by level.

Rulers were provided to students; protractors were provided to students administered test levels 3–11. Formula

sheets were provided on the back of the test booklet for students administered levels 5–8, 10, and 11. The use of

calculators was permitted on the second part of each test. Students administered level 5 and below could use a

four-function calculator, and students administered level 6 and above could use a scientific calculator. Administration

time was about 45 minutes at each grade level. Students administered the level 2 test could have the test read

aloud, and mark in the test booklet, if that was the typical form of assessment in the classroom.

Copyright © 2014 by Scholastic Inc. All rights reserved.

Copyright © 2014 by Scholastic Inc. All rights reserved.

Theoretical Foundation 23

Theoretical Foundation

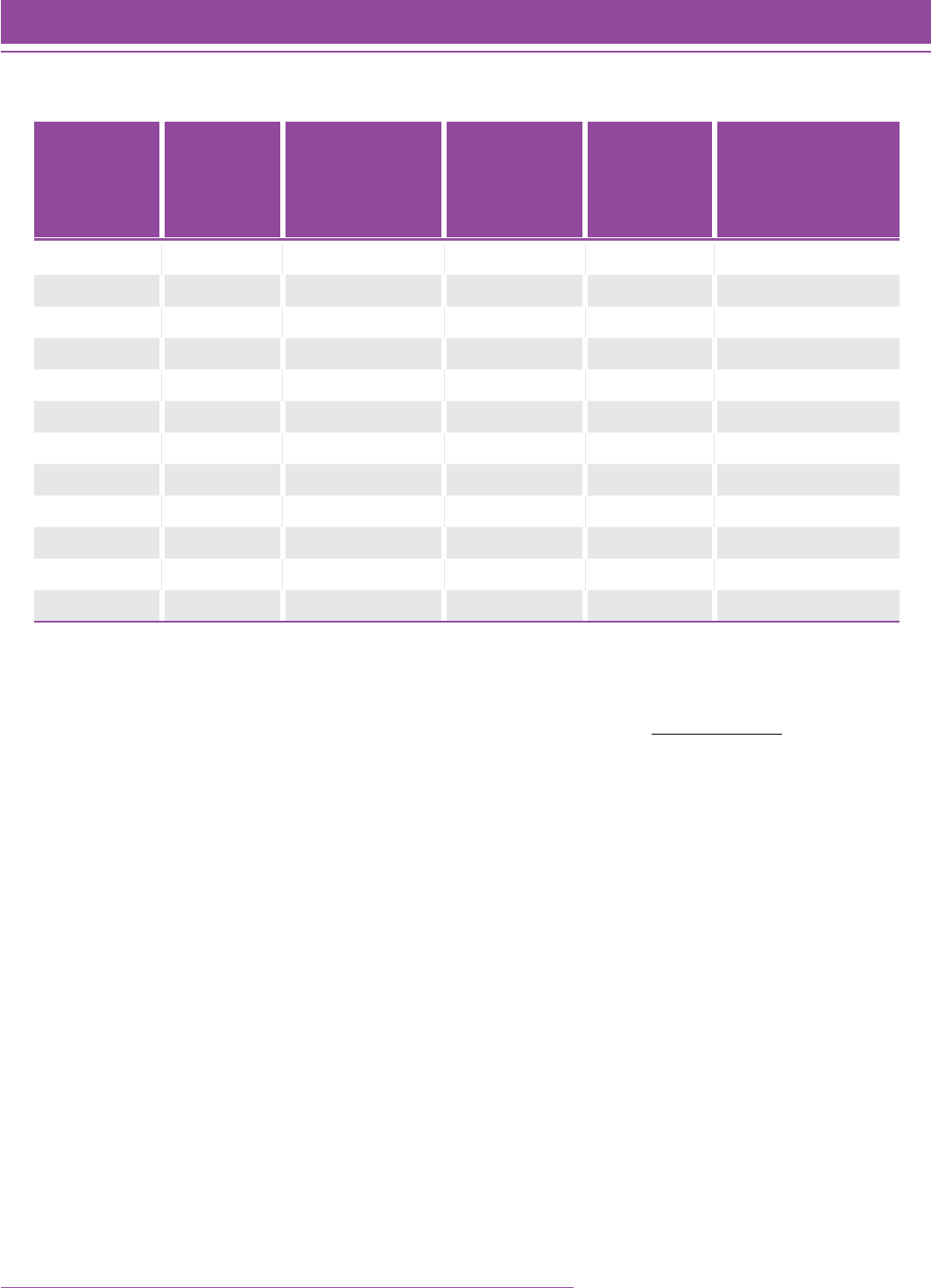

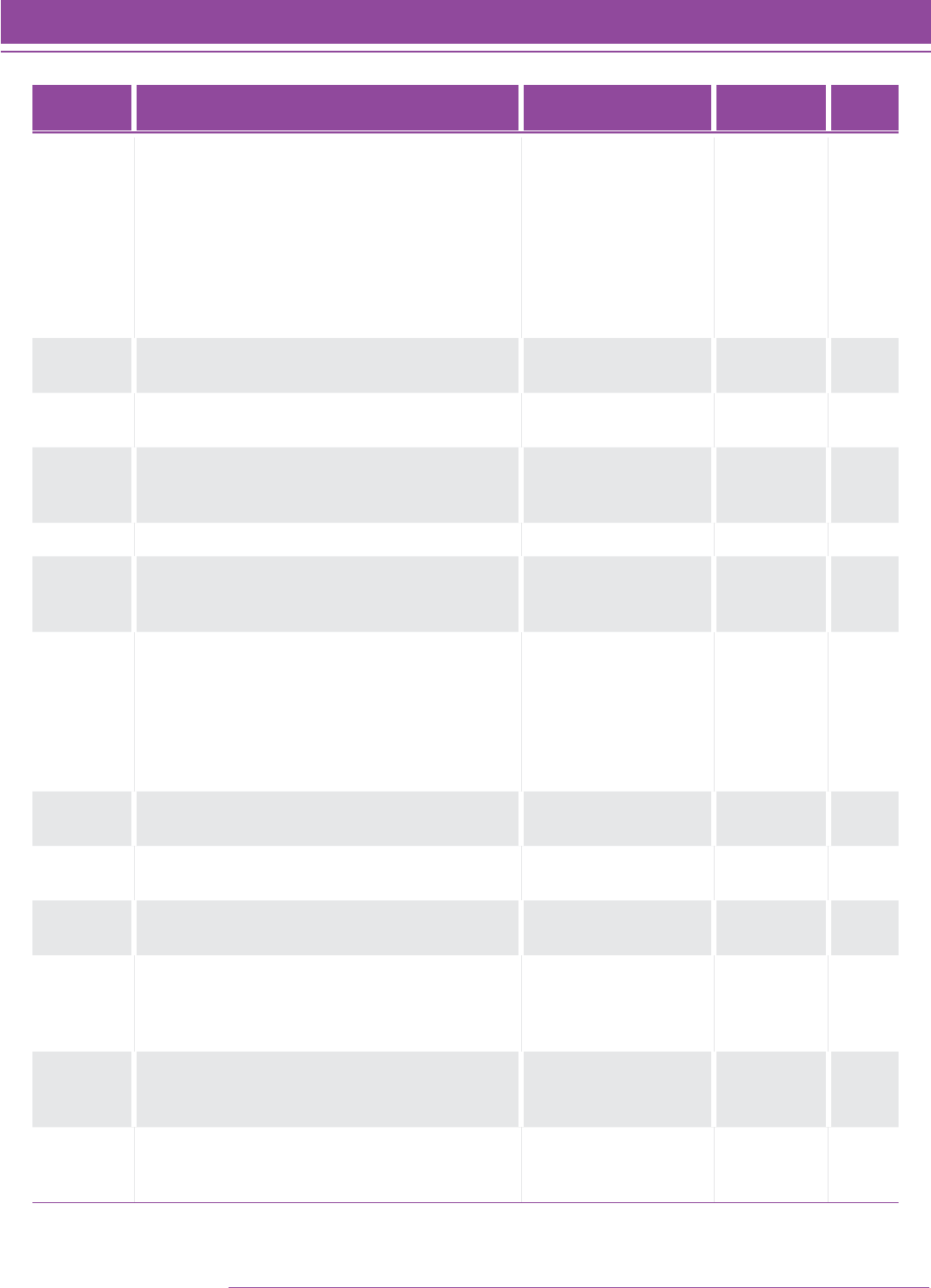

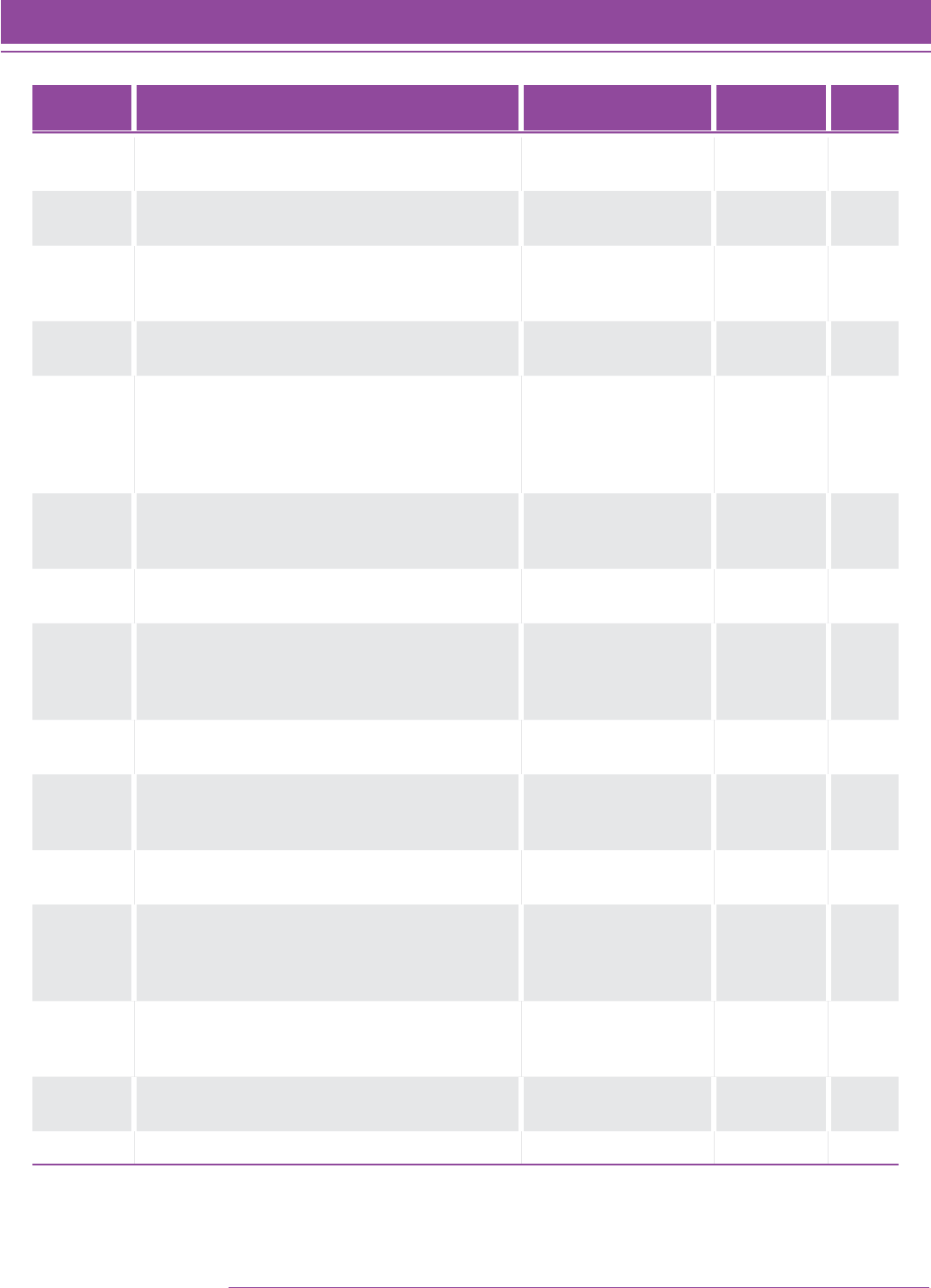

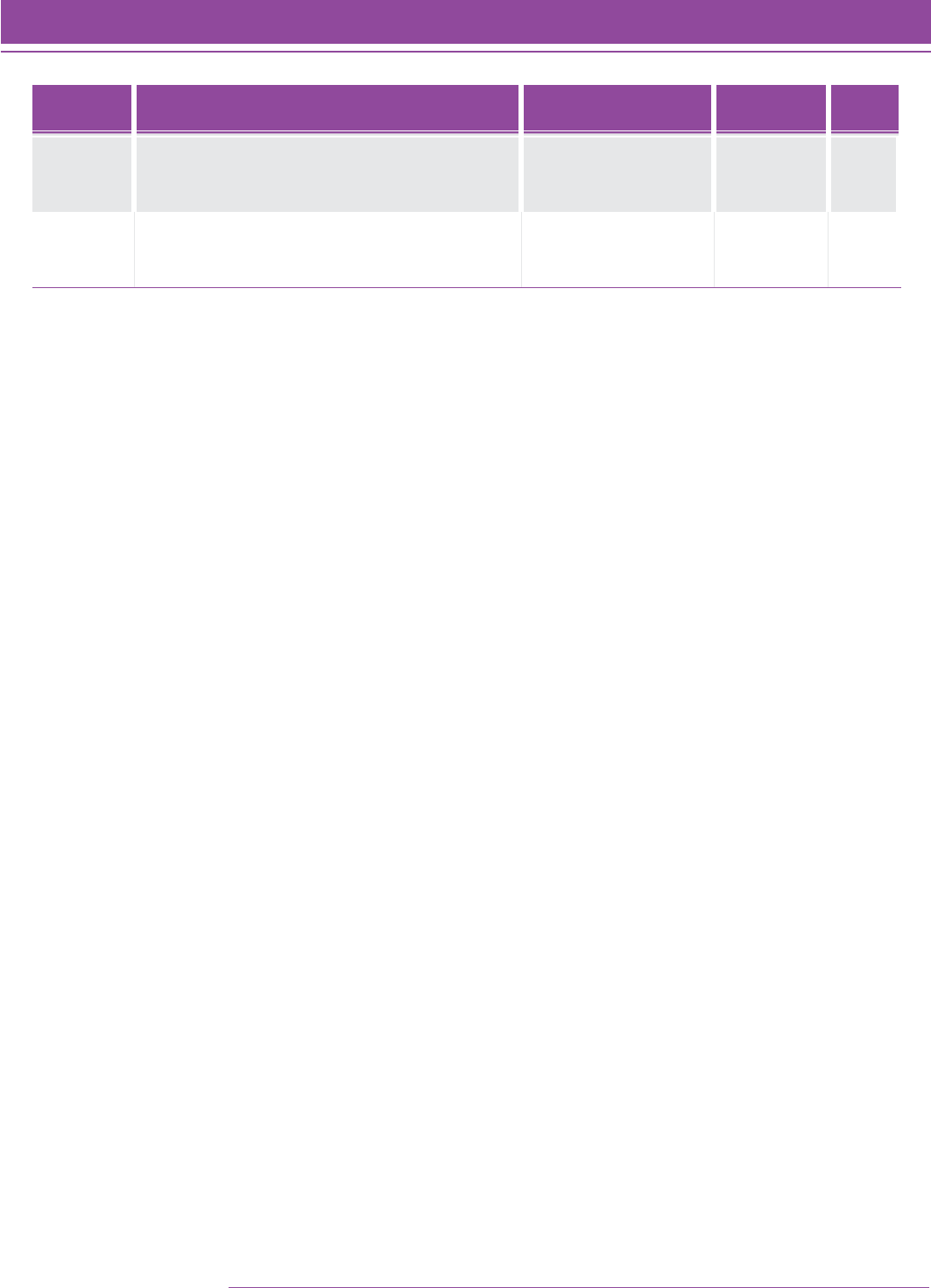

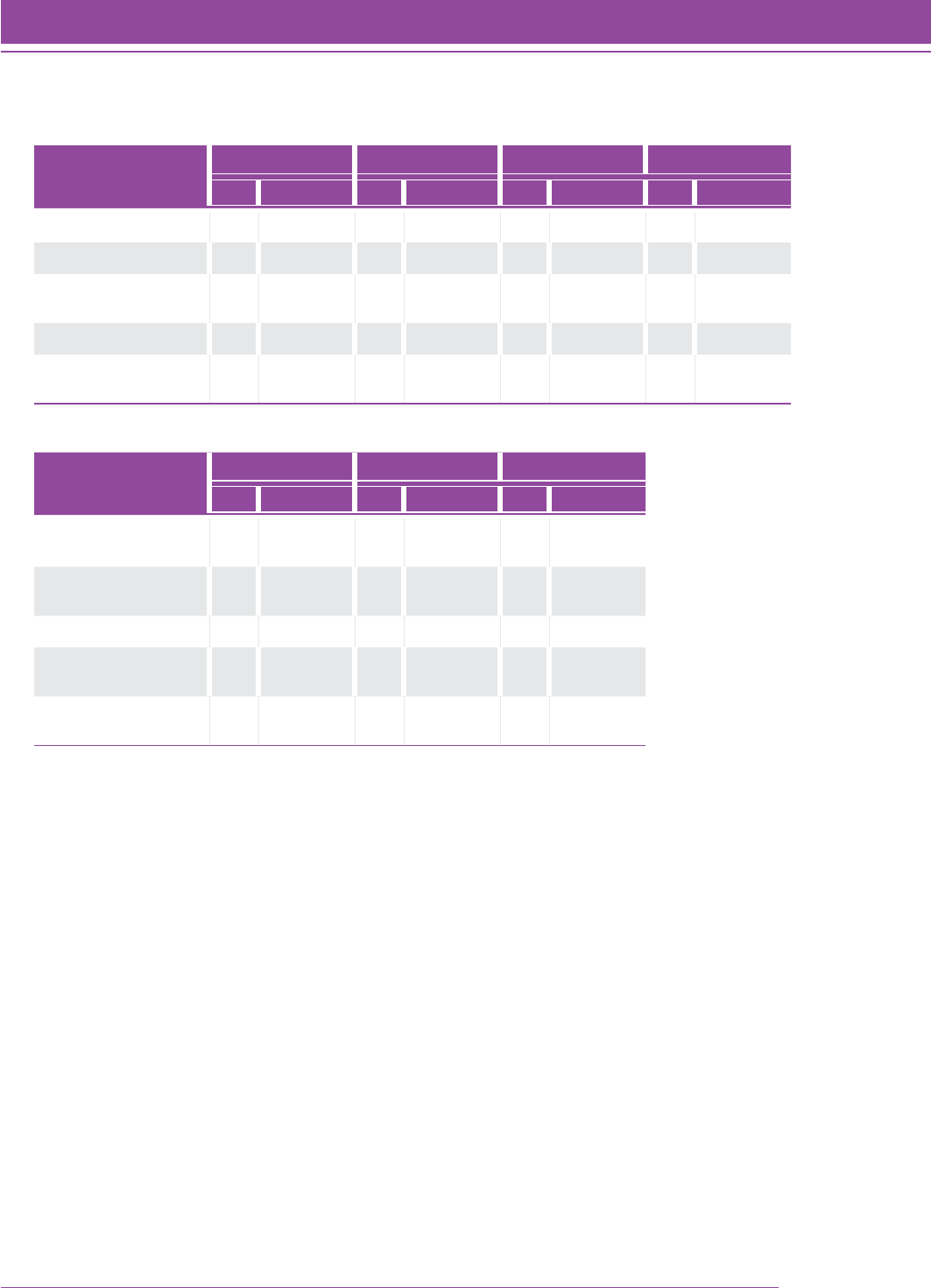

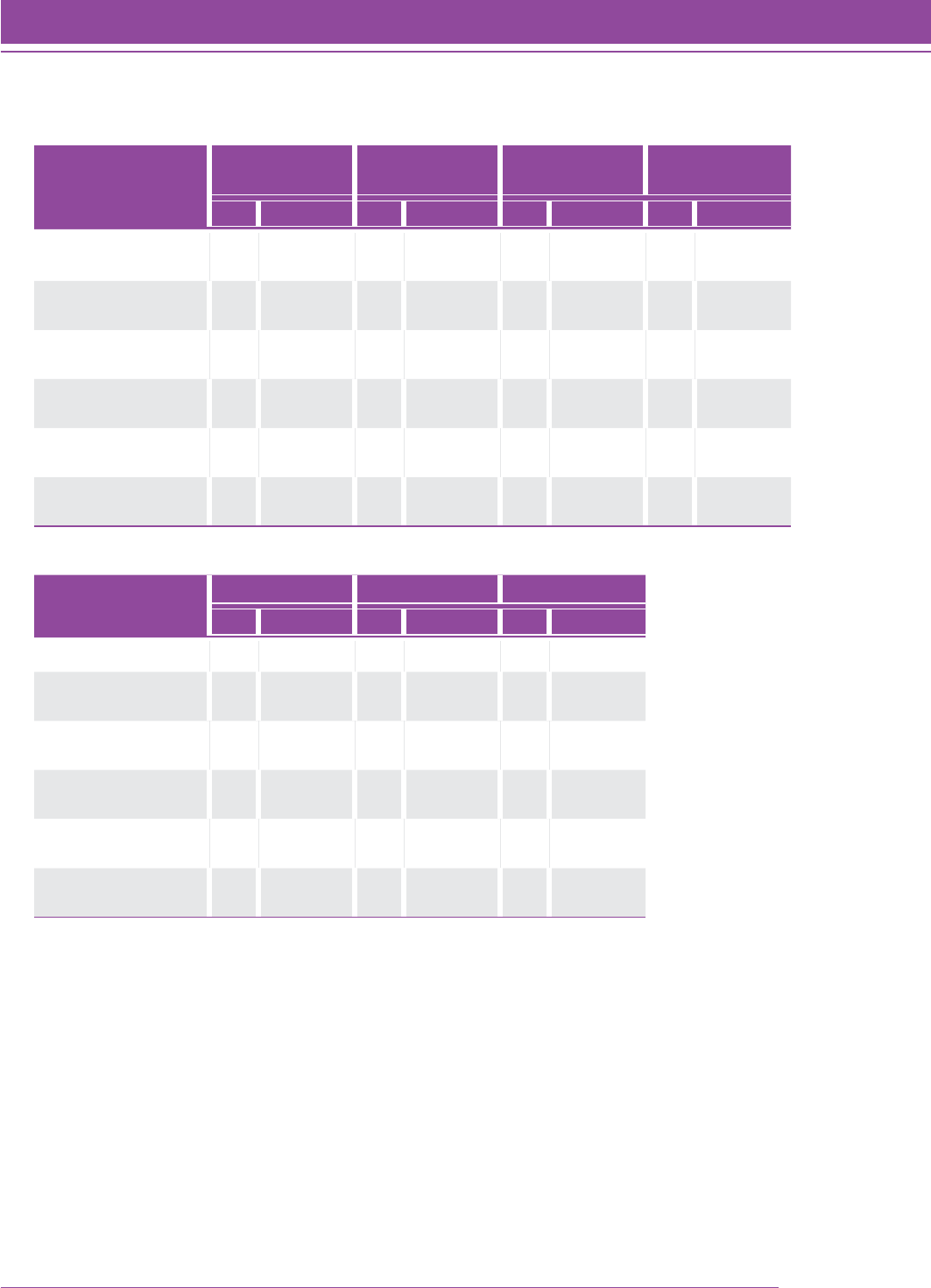

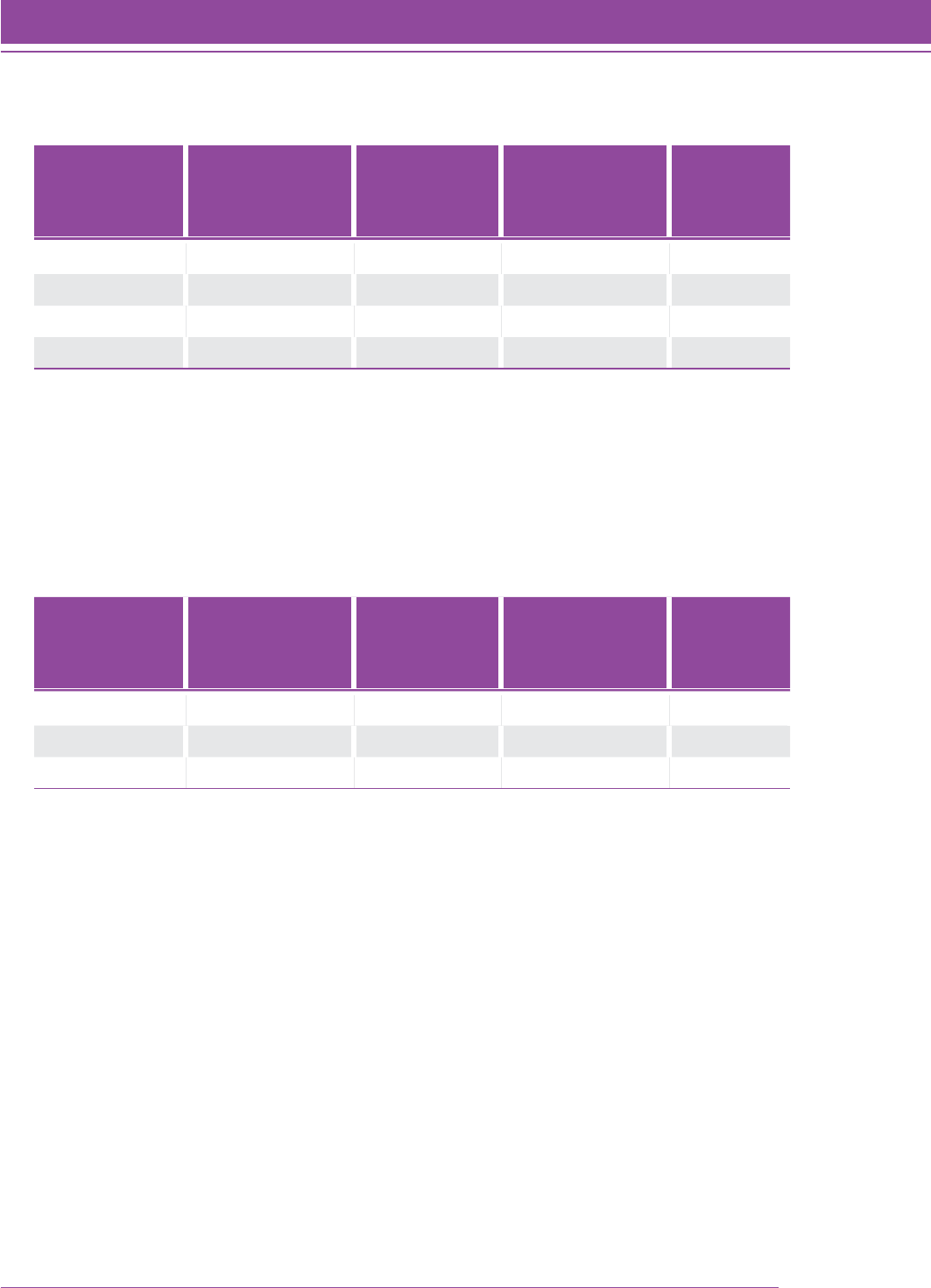

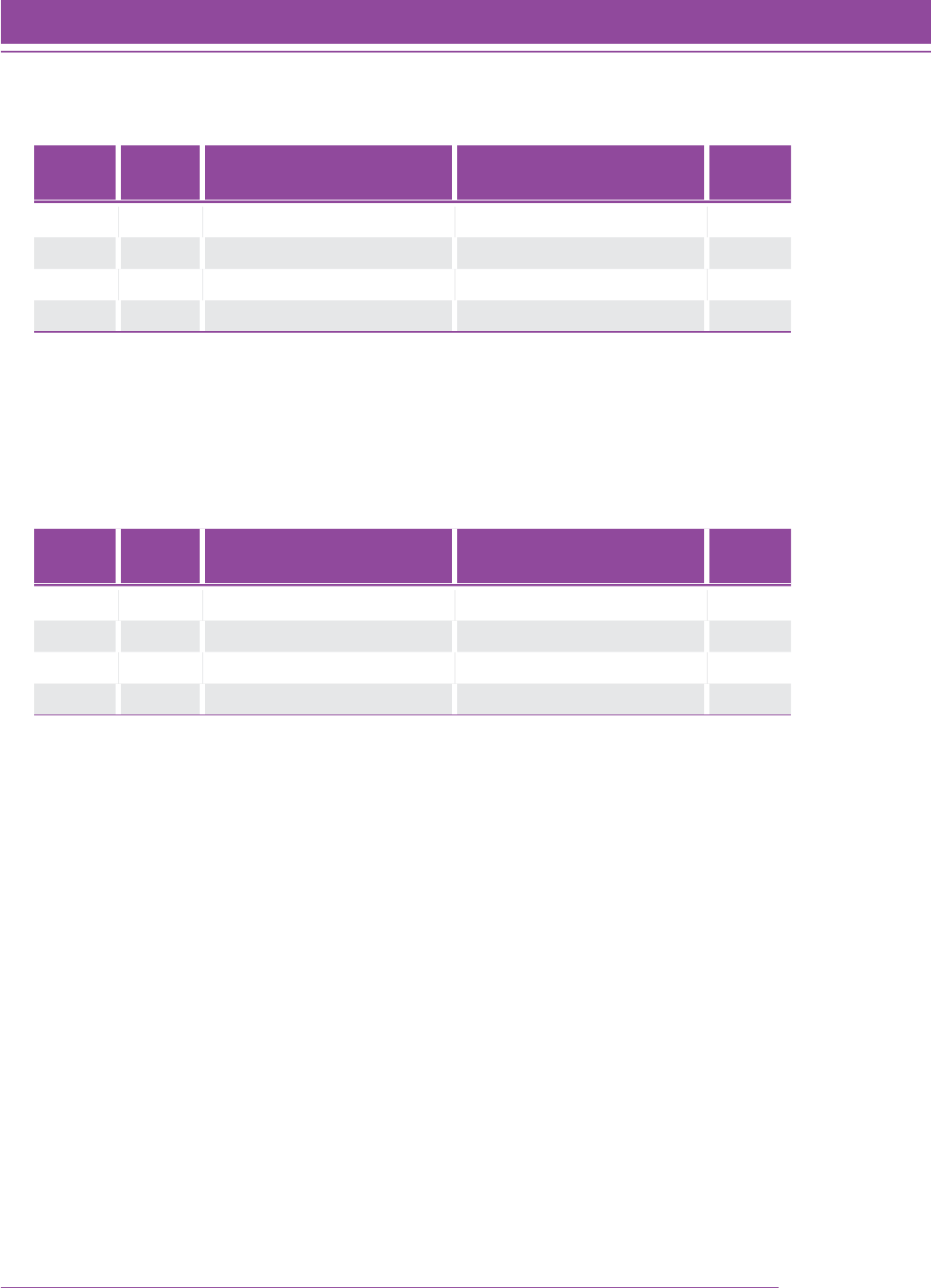

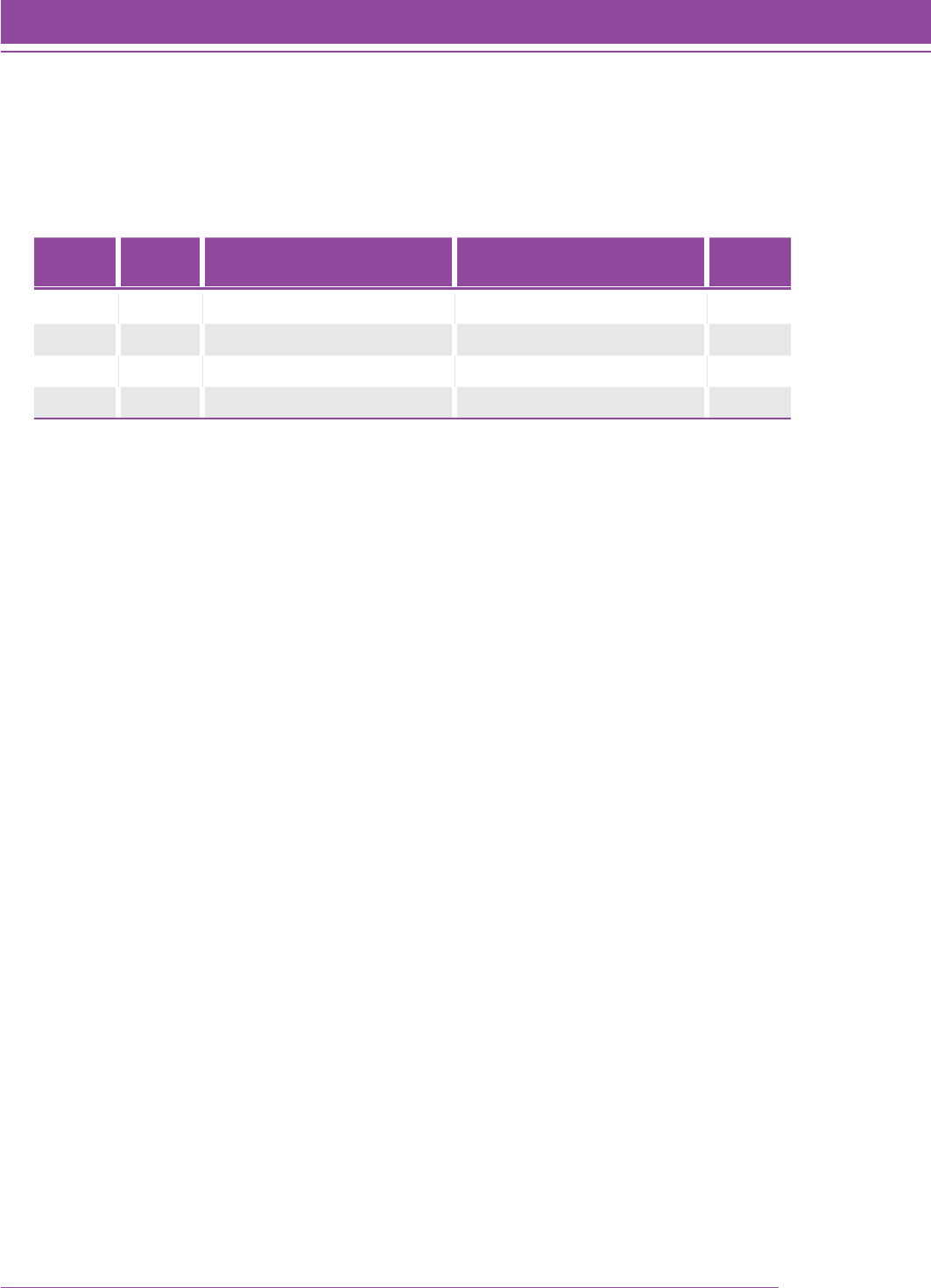

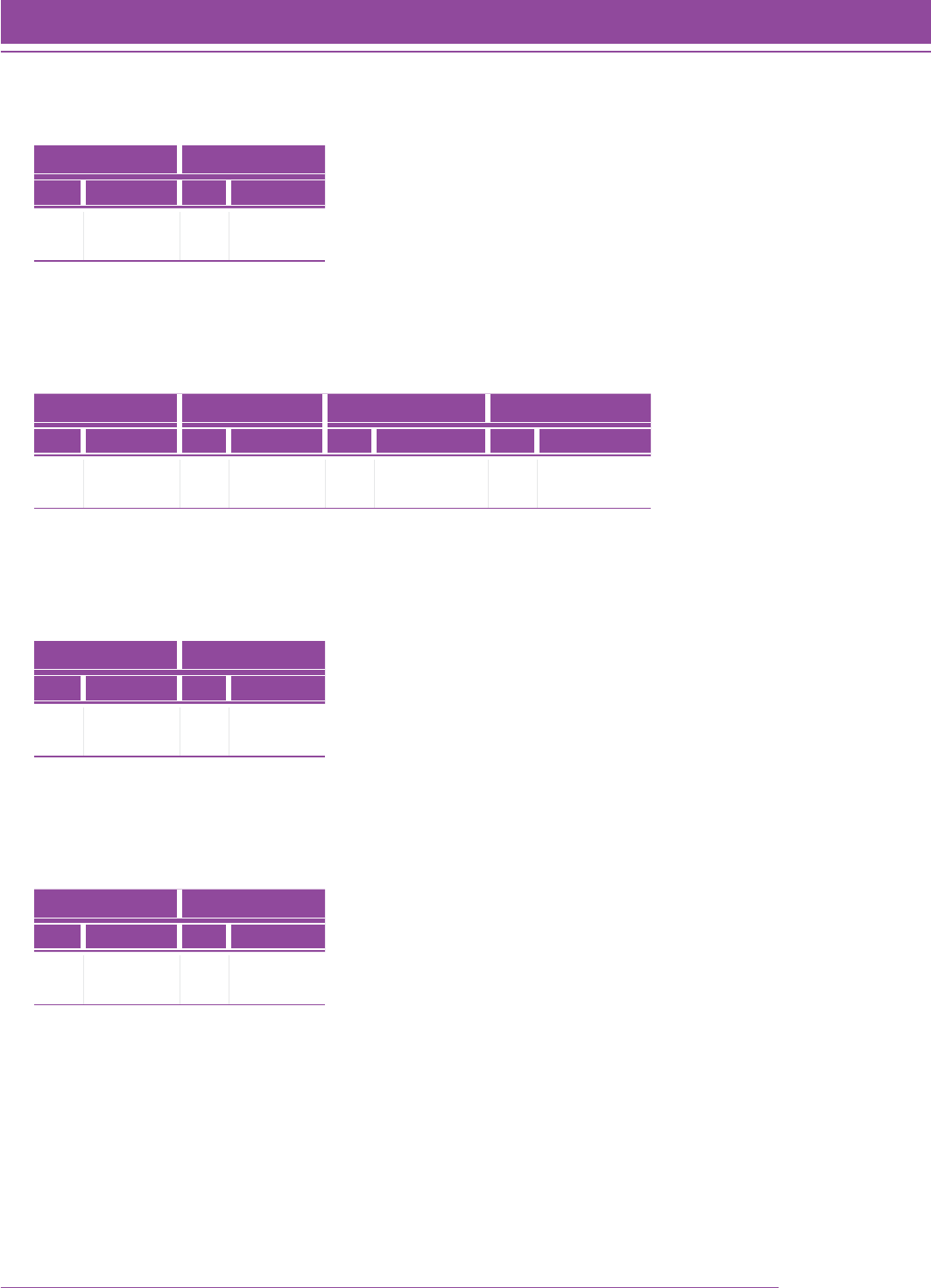

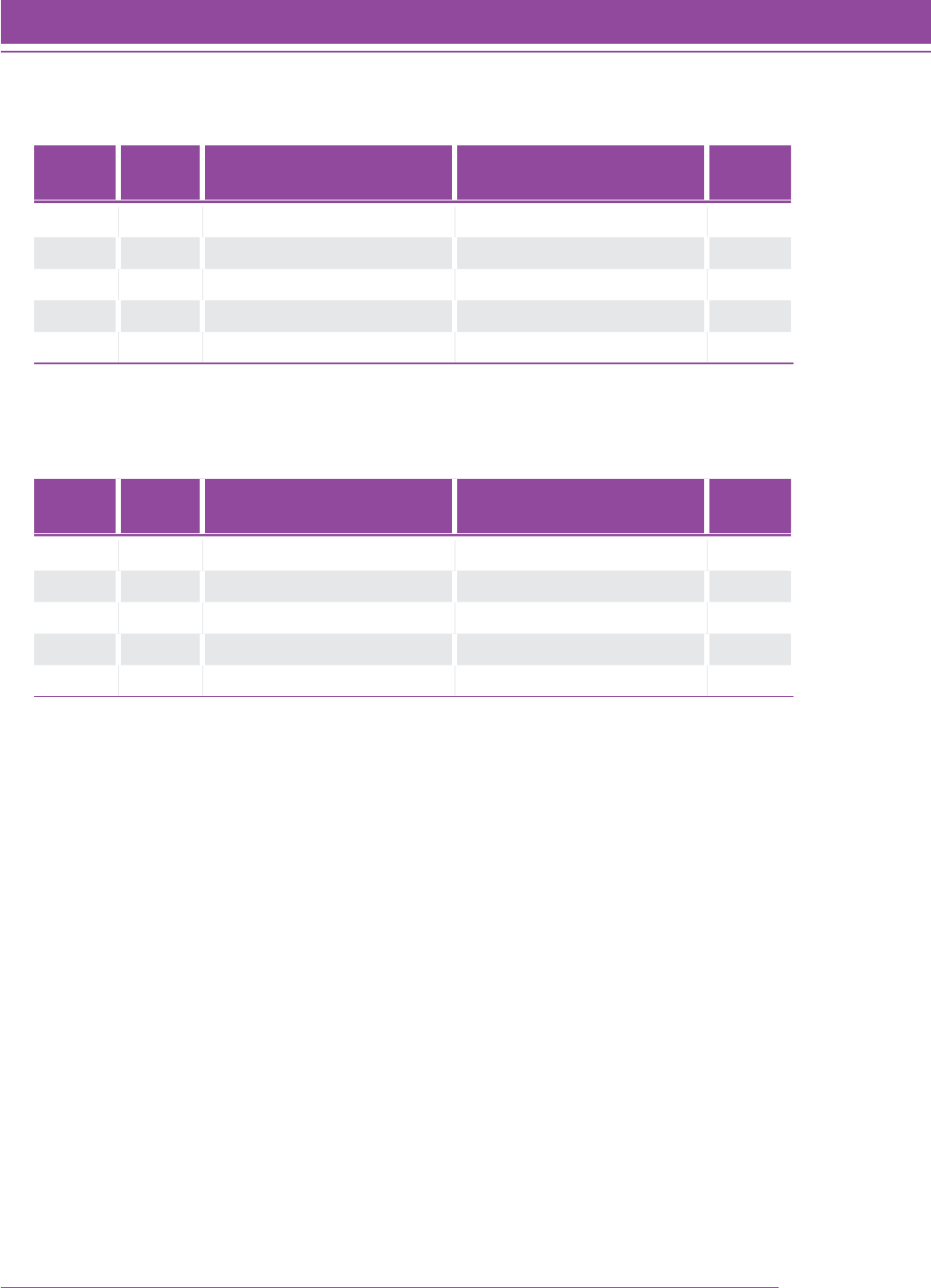

TABLE 1. Field study participation by grade and gender.

| Grade | Sample Size (N) | Percent Female (N) | Percent Male (N) |
|---|---|---|---|
| 2 | 1,283 | 48.1 (562) | 51.9 (606) |
| 3 | 1,354 | 51.9 (667) | 48.1 (617) |
| 4 | 1,454 | 47.7 (622) | 52.3 (705) |
| 5 | 1,344 | 48.9 (622) | 51.1 (650) |
| 6 | 976 | 47.7 (423) | 52.3 (463) |
| 7 | 1,250 | 49.8 (618) | 50.2 (622) |
| 8 | 1,015 | 51.9 (518) | 48.1 (481) |
| 9 | 489 | 52.0 (252) | 48.0 (233) |
| 10 | 259 | 48.6 (125) | 51.4 (132) |
| 11 | 206 | 49.3 (101) | 50.7 (104) |
| 12 | 143 | 51.7 (74) | 48.3 (69) |
| Missing | 74 | 39.1 (9) | 60.9 (14) |
| Total | 9,847 | 49.6 (4,615) | 50.4 (4,696) |

TABLE 2. Test form administration by level.

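| Test Level | N | Missing | Form A | Form B | Form C |
|---|---|---|---|---|---|
| 2 | 1,283 | 4 | 453 | 430 | 397 |
| 3 | 1,354 | 7 | 561 | 387 | 399 |
| 4 | 1,454 | 17 | 616 | 419 | 402 |
| 5 | 1,344 | 3 | 470 | 448 | 423 |
| 6 | 917 | 13 | 322 | 293 | 289 |
| 7 | 1,309 | 6 | 462 | 429 | 411 |
| 8 | 1,181 | 16 | 387 | 391 | 387 |
| 9 | 415 | 4 | 141 | 136 | 134 |
| 10 | 226 | 5 | 73 | 77 | 71 |
| 11 | 313 | 10 | 102 | 101 | 100 |
| Missing | 51 | 31 | 9 | 8 | 3 |
| Total | 9,847 | 116 | 3,596 | 3,119 | 3,016 |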


At the conclusion of the field test, complete data were available from 9,678 students. Data were deleted if the test

level or the test form was not indicated on the answer sheet, or if the answer sheet was blank. These field-test data

were analyzed using both the classical measurement model and the Rasch (one-parameter logistic item response

theory) model. Item statistics and descriptive information (item number, field-test form and item number, QSC, and

answer key) were printed for each item and attached to the item record. The item record contained the statistical,

descriptive, and historical information for an item, a copy of the item as it appeared on the test forms, any comments

by reviewers, and the psychometric notations. Each item had a separate item record.

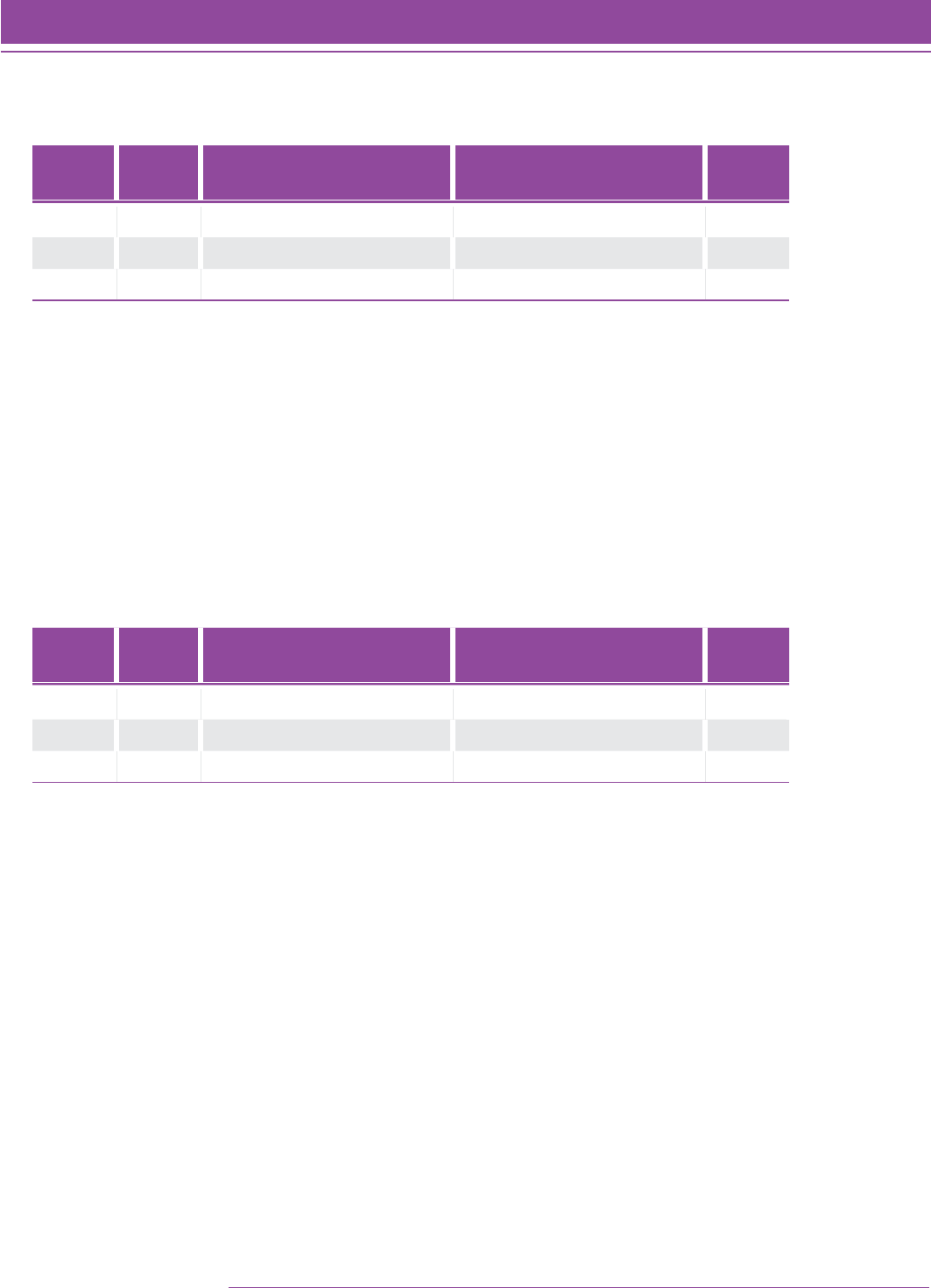

Field-Test Analyses—Classical Measurement

For each item, the p-value (percent correct) and the point-biserial correlation between the item score (correct

response) and the total test score were computed. Point-biserial correlations were also computed between each of

the incorrect responses and the total score. In addition, frequency distributions of the response choices (including

omits) were tabulated (both actual counts and percents).

Point-biserial correlations provide an estimate of the relationship of ability as measured by a specific item and ability

as measured by the overall test. All items were retained for further analyses during the development of the Quantile

scale using the Rasch item response theory model. Items with point-biserial correlations less than 0.10 were

removed from the item bank for future linking studies. Table 3 displays the summary item statistics.
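The two classical statistics described above can be sketched in a few lines. This is a minimal illustration, not the software used in the field study: the function names and the response data are invented, and the point-biserial is computed as the Pearson correlation between a 0/1 item score and the total test score.

```python
from math import sqrt


def p_value(item_scores):
    """Proportion of examinees answering the item correctly."""
    return sum(item_scores) / len(item_scores)


def point_biserial(item_scores, total_scores):
    """Pearson correlation between a 0/1 item score and the total score."""
    n = len(item_scores)
    mean_x = sum(item_scores) / n
    mean_y = sum(total_scores) / n
    cov = sum((x - mean_x) * (y - mean_y)
              for x, y in zip(item_scores, total_scores))
    var_x = sum((x - mean_x) ** 2 for x in item_scores)
    var_y = sum((y - mean_y) ** 2 for y in total_scores)
    return cov / sqrt(var_x * var_y)


# Invented data: eight examinees, one item, and their total test scores.
item = [1, 1, 0, 1, 0, 0, 1, 1]
totals = [25, 22, 10, 18, 12, 9, 27, 20]

p = p_value(item)
r = point_biserial(item, totals)
flagged = r < 0.10  # removal threshold stated in the text
print(p, flagged)   # 0.625 False
```

The same function applied to an indicator for each incorrect response choice would give the distractor correlations, which are expected to be negative for a well-functioning item.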

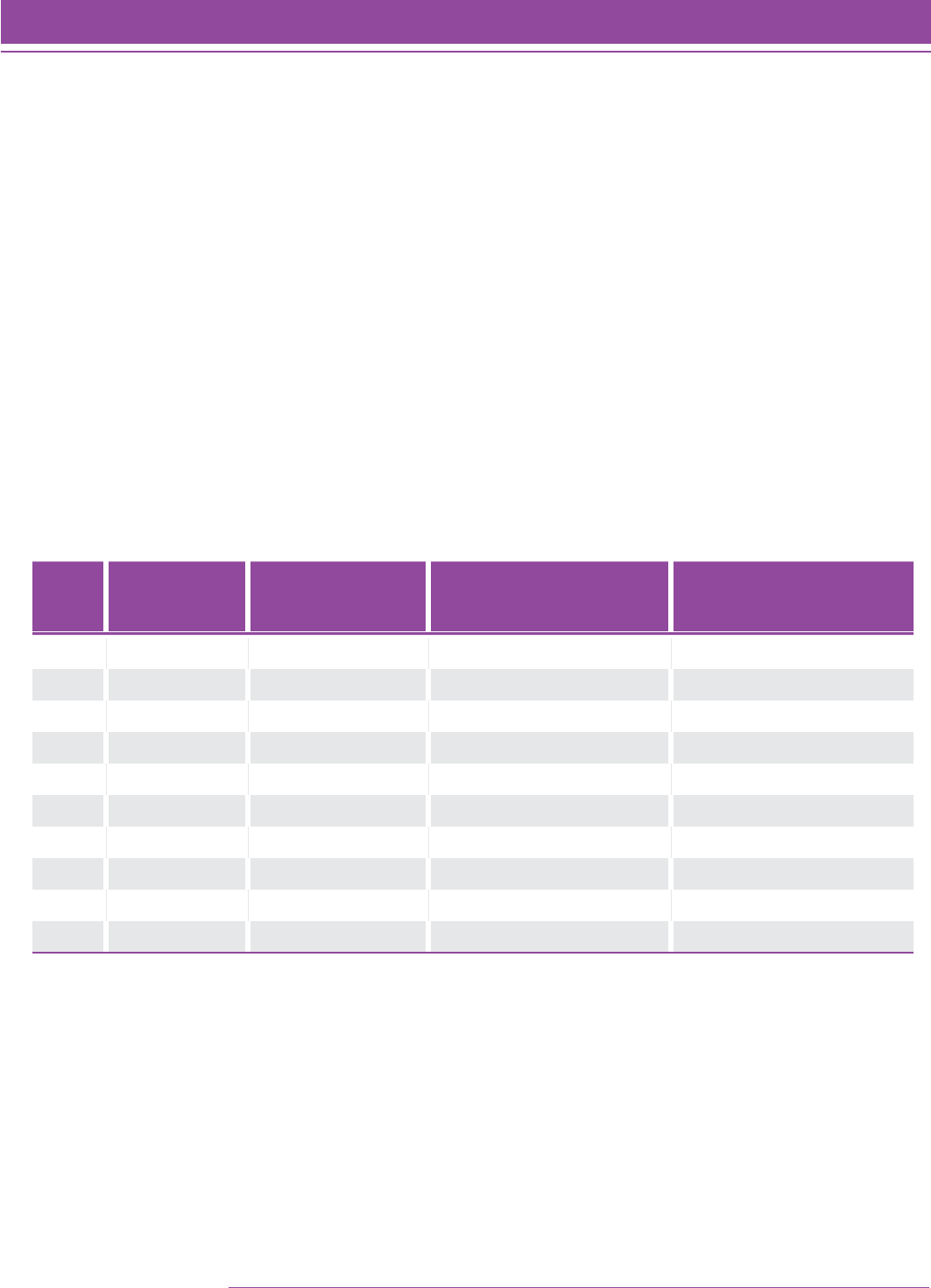

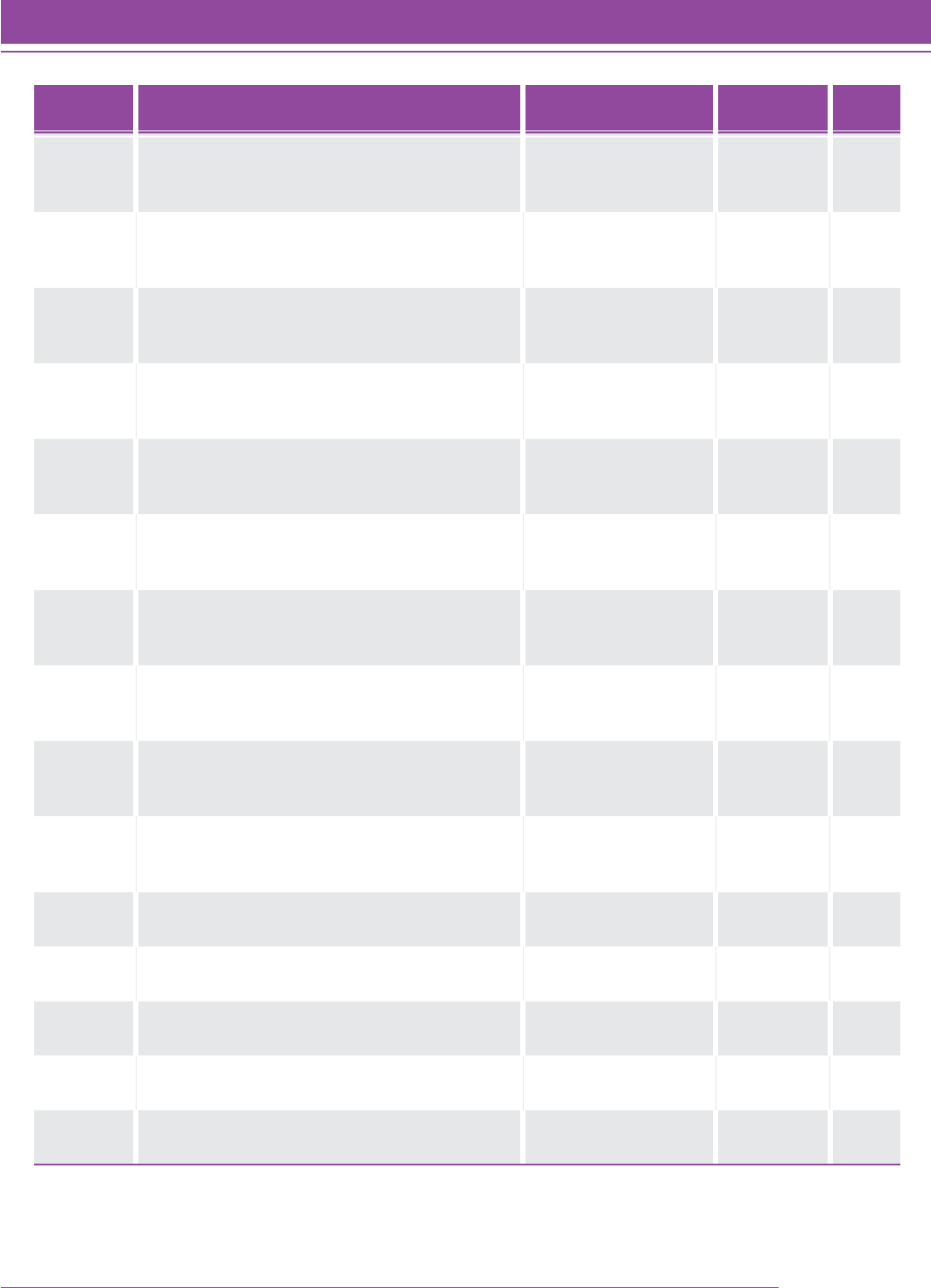

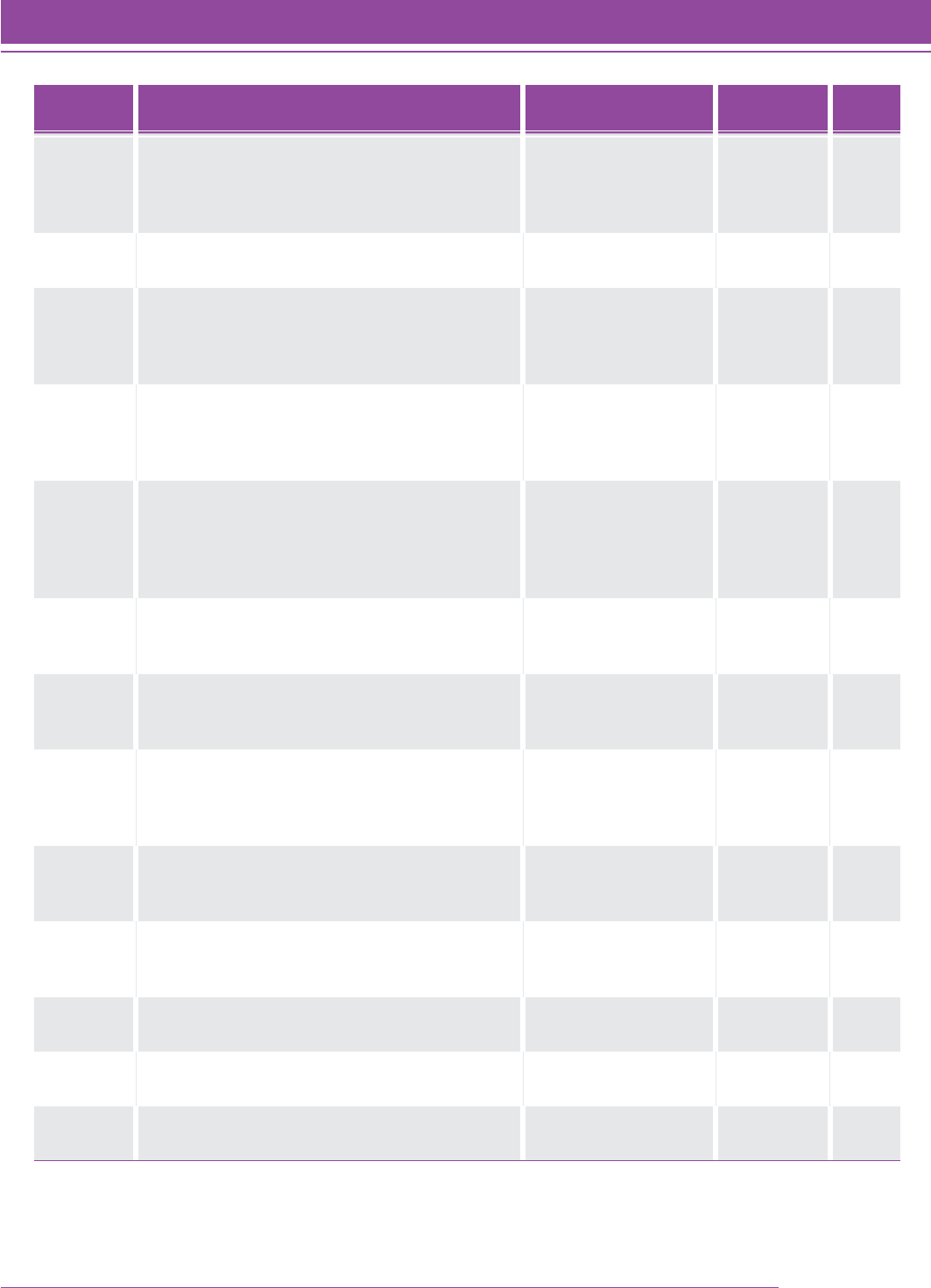

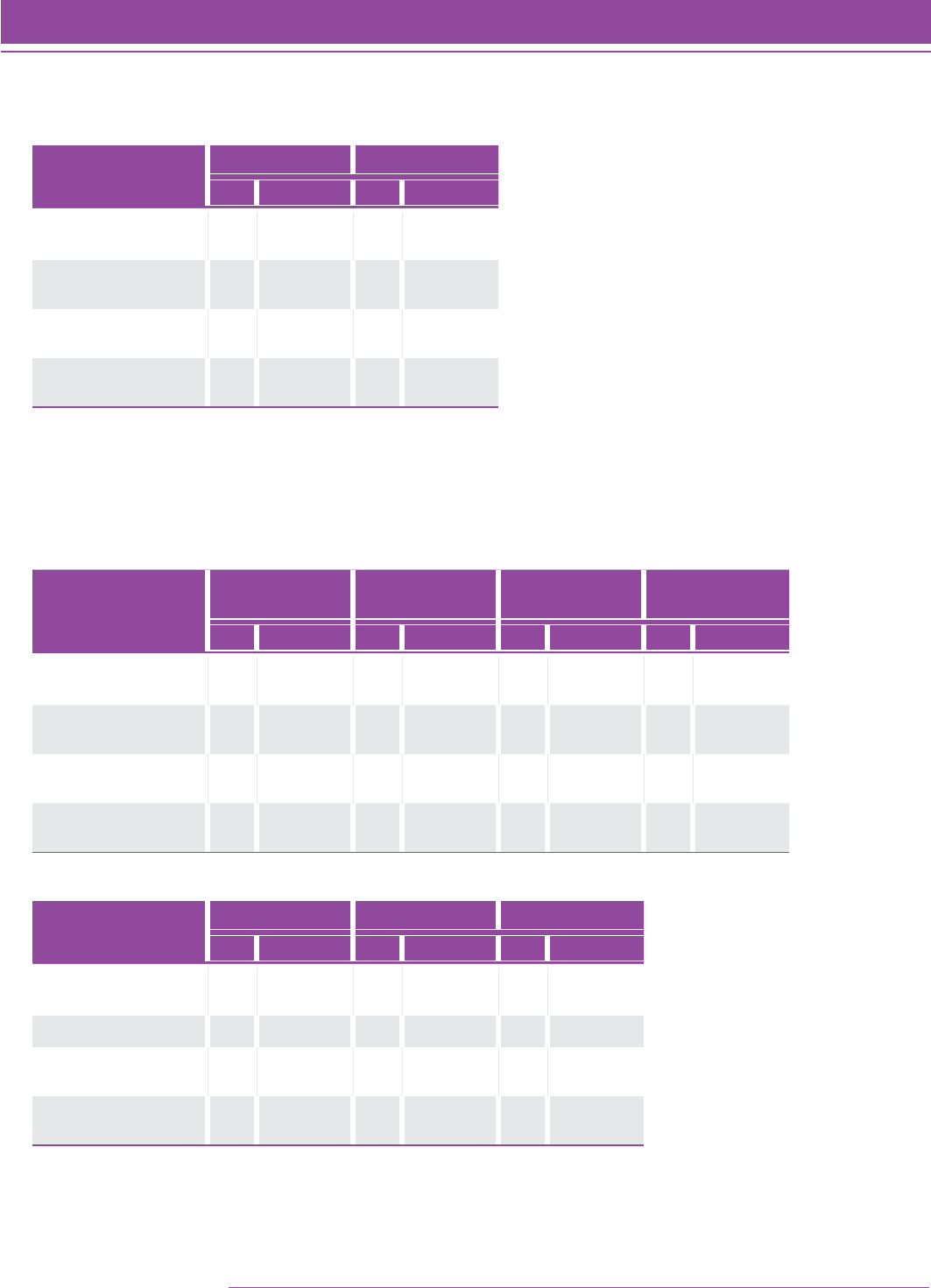

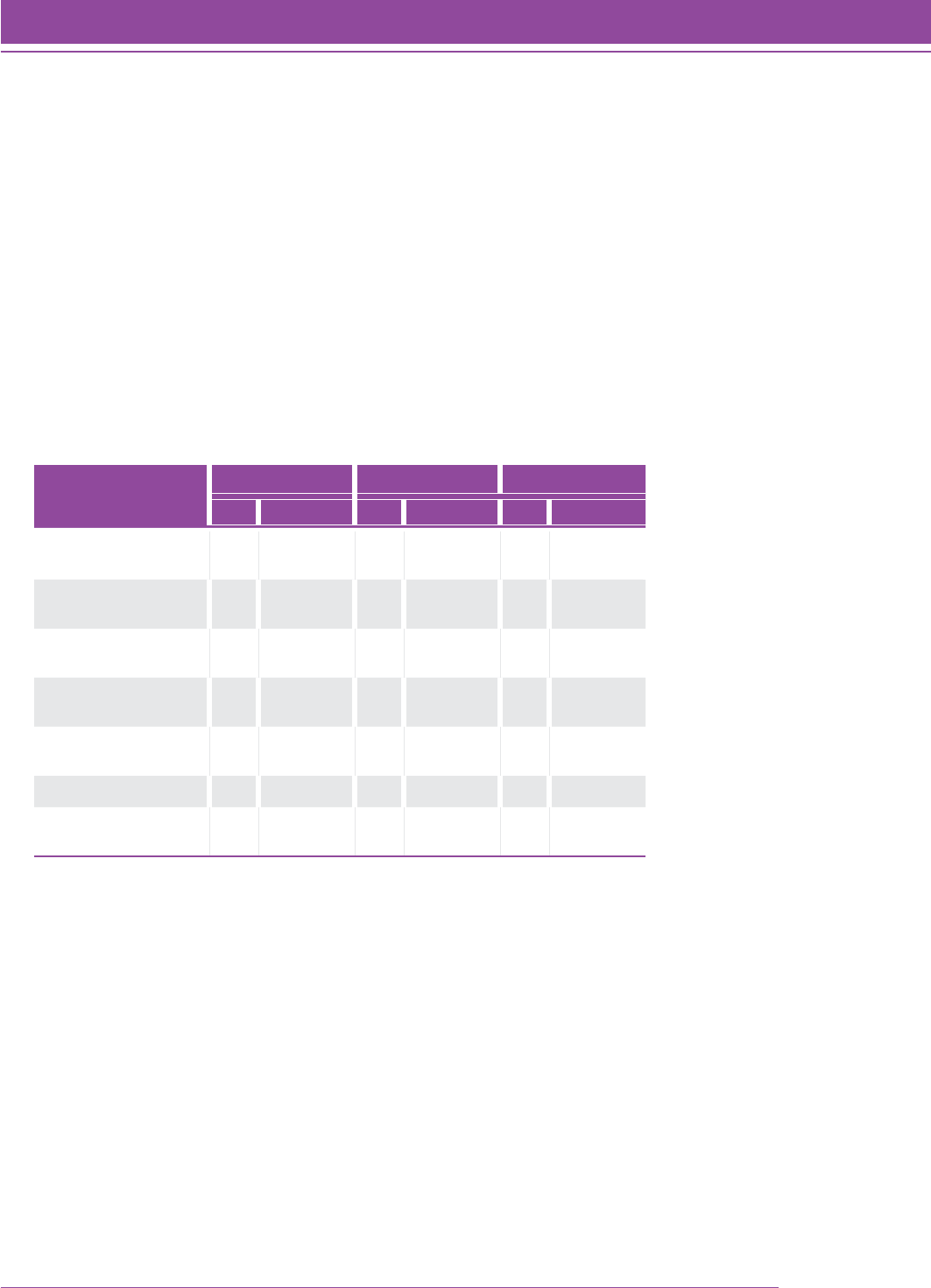

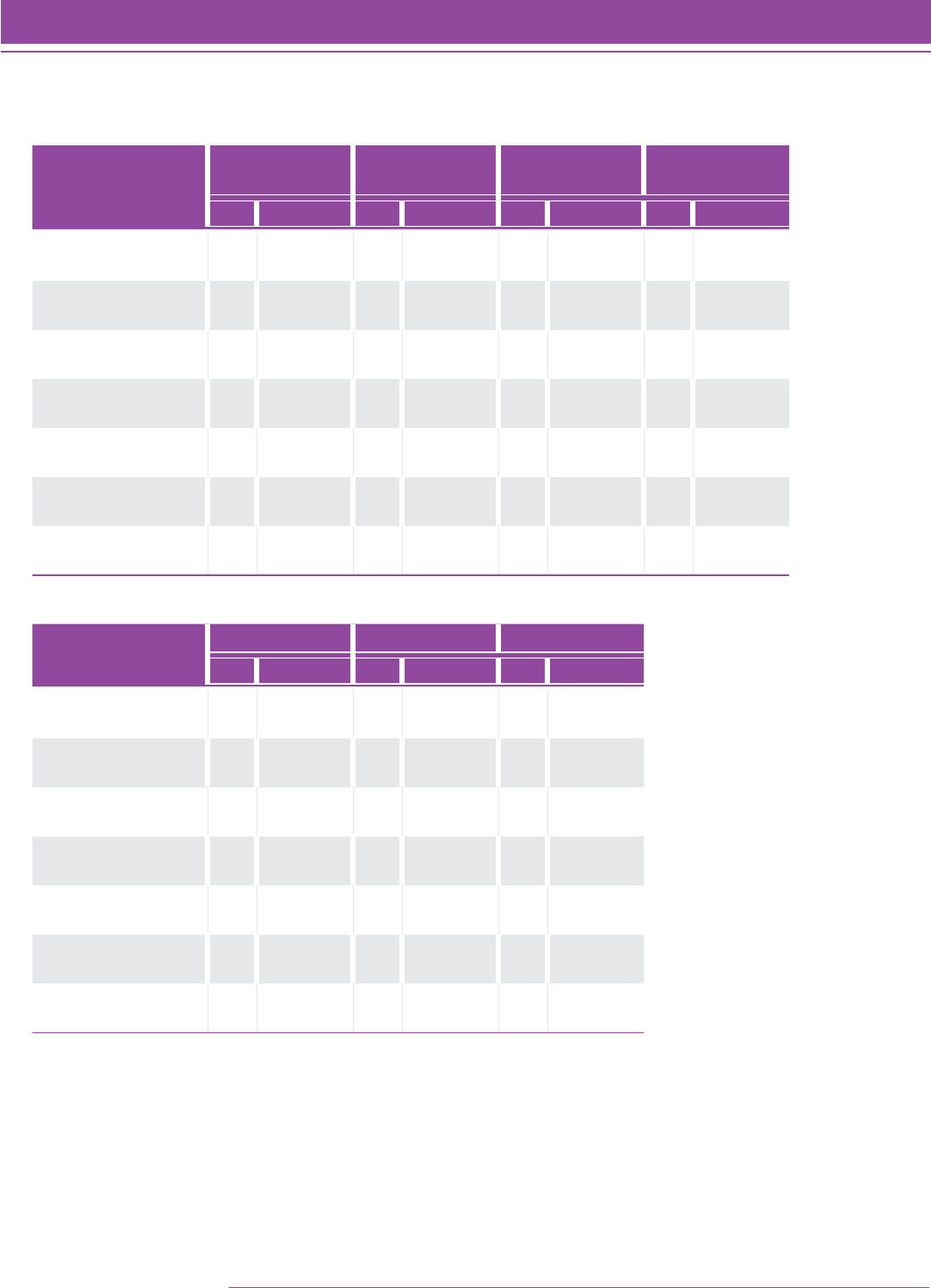

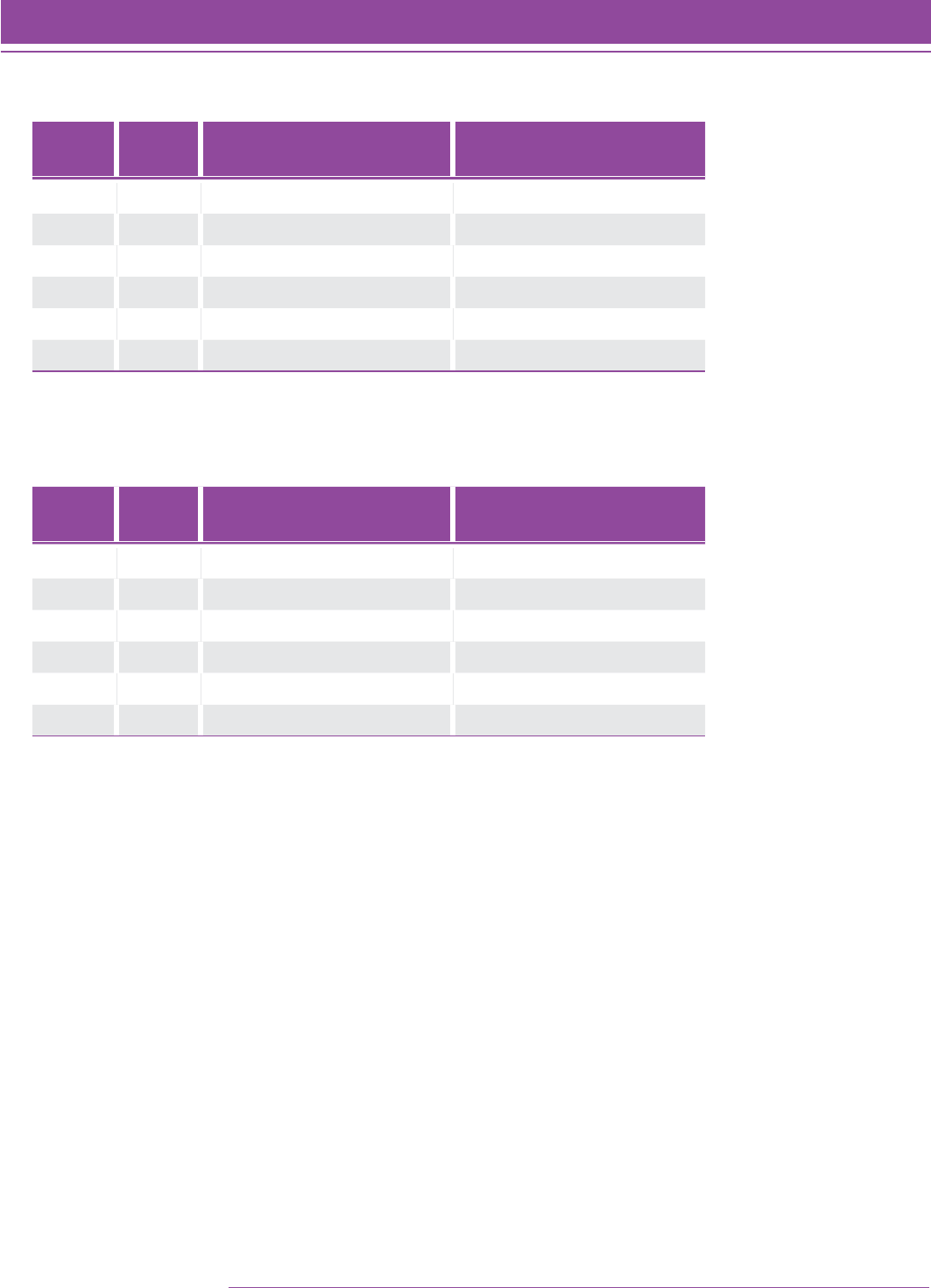

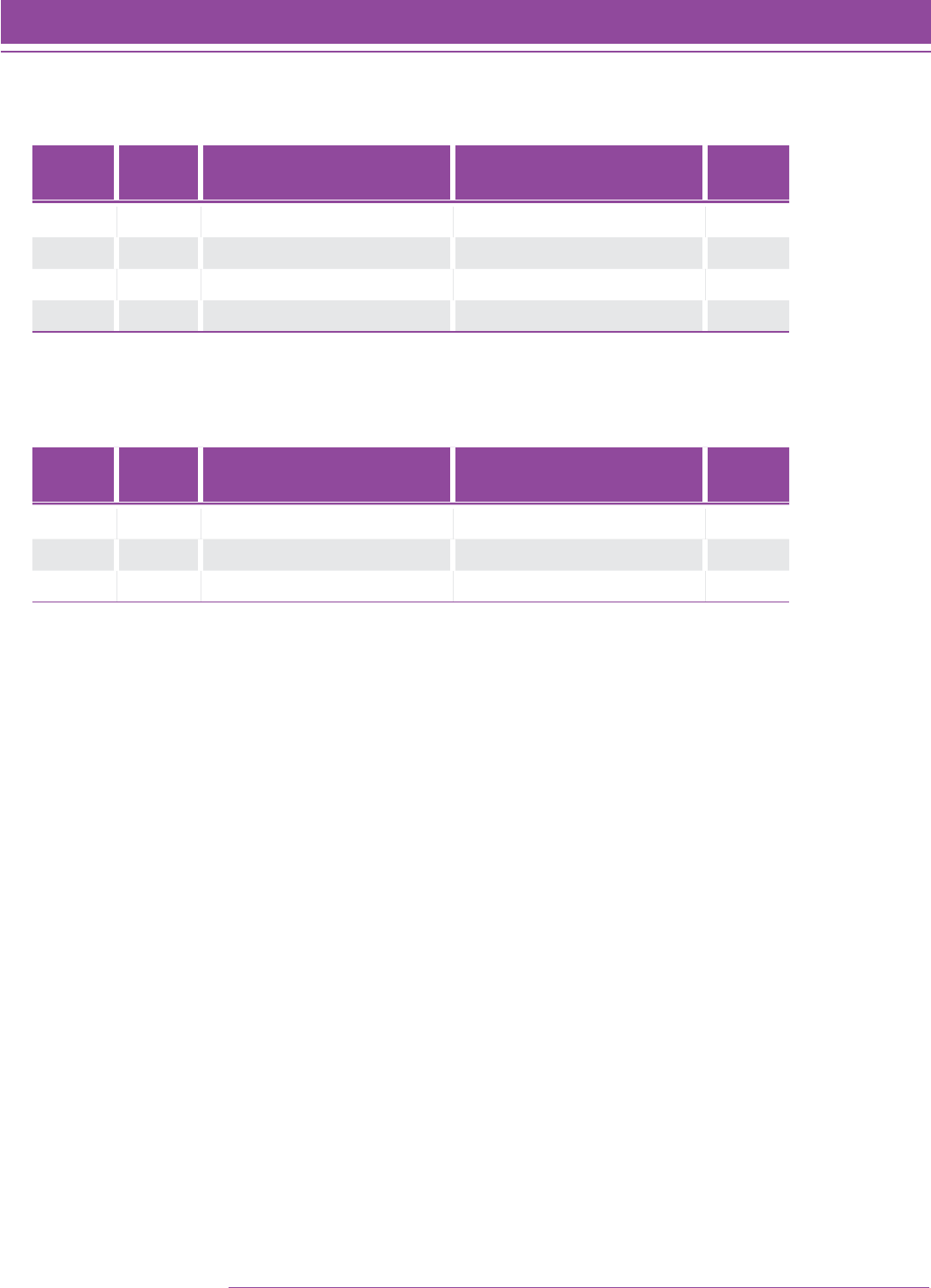

TABLE 3. Summary item statistics from the Quantile Framework field study.

| Level | Number of Items Tested | Mean p-value (Range) | Mean Correct Response Point-Biserial Correlation (Range) | Mean Incorrect Responses Point-Biserial Correlation (Range) |
|---|---|---|---|---|
| 2 | 90 | 0.583 (0.12 to 0.95) | 0.322 (-0.15 to 0.56) | -0.209 (-0.43 to 0.12) |
| 3 | 90 | 0.532 (0.11 to 0.93) | 0.256 (-0.08 to 0.52) | -0.221 (-0.54 to 0.02) |
| 4 | 90 | 0.552 (0.12 to 0.92) | 0.242 (-0.21 to 0.50) | -0.222 (-0.48 to 0.12) |
| 5 | 90 | 0.535 (0.12 to 0.95) | 0.279 (-0.05 to 0.50) | -0.225 (-0.45 to 0.05) |
| 6 | 90 | 0.515 (0.04 to 0.86) | 0.244 (-0.08 to 0.45) | -0.218 (-0.46 to 0.09) |
| 7 | 90 | 0.438 (0.10 to 0.77) | 0.294 (-0.12 to 0.56) | -0.207 (-0.46 to 0.25) |
| 8 | 90 | 0.433 (0.10 to 0.81) | 0.257 (-0.15 to 0.50) | -0.201 (-0.45 to 0.13) |
| 9 | 90 | 0.396 (0.10 to 0.79) | 0.208 (-0.19 to 0.52) | -0.193 (-0.53 to 0.22) |
| 10 | 90 | 0.511 (0.01 to 0.97) | 0.193 (-0.26 to 0.53) | -0.205 (-0.55 to 0.18) |
| 11 | 90 | 0.527 (0.09 to 0.98) | 0.255 (-0.09 to 0.51) | -0.223 (-0.52 to 0.07) |


Field-Test Analyses—Bias

Differential item functioning (DIF) examines the relationship between the score on an item and group membership,

while controlling for achievement. The Mantel-Haenszel procedure is a widely used methodology to examine differential item functioning (Roussos, Schnipke, & Pashley, 1999, p. 293). The Mantel-Haenszel procedure examines DIF by examining $j$ $2 \times 2$ contingency tables, where $j$ is the number of different levels of achievement actually

accomplished by the examinees (actual total scores received on the test). The focal group is the group of interest

and the reference group serves as a basis for comparison for the focal group (Camilli & Shepard, 1994; Dorans &

Holland, 1993).

The Mantel-Haenszel chi-square statistic tests the alternative hypothesis that there is a linear association between the row variable (score on the item) and the column variable (group membership). The Mantel-Haenszel $\chi^2$ statistic has one degree of freedom and is determined as:

$$Q_{MH} = (n - 1) r^2$$ (Equation 1)

where $r$ is the Pearson correlation between the row variable and the column variable and $n$ is the sample size (SAS Institute Inc., 1985).
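As a rough illustration, Equation 1 can be applied literally to a single table of item scores and group codes. The sketch below does not stratify by achievement level, so it is not a full DIF analysis; the function names and the data are invented for the example.

```python
from math import sqrt


def pearson_r(xs, ys):
    """Pearson correlation between two equal-length numeric sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


def mantel_haenszel_chi_square(item_scores, group_codes):
    """Q_MH = (n - 1) * r^2, with one degree of freedom (Equation 1)."""
    n = len(item_scores)
    r = pearson_r(item_scores, group_codes)
    return (n - 1) * r * r


# Invented data: 0/1 item scores; group 0 = reference, group 1 = focal.
scores = [1, 1, 0, 1, 0, 1, 0, 0]
groups = [0, 0, 0, 0, 1, 1, 1, 1]

q_mh = mantel_haenszel_chi_square(scores, groups)
print(q_mh)  # 1.75
```

Here three of four reference-group examinees and one of four focal-group examinees answer correctly, giving r = -0.5 and a statistic of 7 × 0.25 = 1.75.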

The Mantel-Haenszel Log Odds Ratio statistic is used to determine the direction of DIF and can be calculated

using SAS. This measure is obtained by combining the odds ratios, $\alpha_j$, across levels with the formula for weighted averages (Camilli & Shepard, 1994).
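One common way to combine the per-level odds ratios is the standard Mantel-Haenszel estimator, sketched below. The guide does not spell out the weighting it used, so treating this estimator as the intended one is an assumption; the function name, the tuple layout, and the counts are all invented for illustration.

```python
from math import log


def mh_common_odds_ratio(strata):
    """Mantel-Haenszel weighted average of per-level odds ratios.

    Each stratum is (ref_correct, ref_incorrect, focal_correct, focal_incorrect)
    for one achievement level.
    """
    numerator = 0.0
    denominator = 0.0
    for ref_right, ref_wrong, focal_right, focal_wrong in strata:
        n_j = ref_right + ref_wrong + focal_right + focal_wrong
        numerator += ref_right * focal_wrong / n_j
        denominator += ref_wrong * focal_right / n_j
    return numerator / denominator


# Invented counts for two achievement levels.
levels = [(30, 10, 25, 15), (20, 20, 15, 25)]

alpha_mh = mh_common_odds_ratio(levels)
log_odds = log(alpha_mh)
# Under this layout, a positive log odds ratio means the item favors the
# reference group and a negative value means it favors the focal group.
print(log_odds > 0)  # True
```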

For the gender analyses, males (approximately 50.4% of the population) were defined as the reference group and

females (approximately 49.6% of the population) were defined as the focal group.

The results from the Quantile Framework field study were reviewed for inclusion in future linking studies. The

following statistics were reviewed for each item: p-value, point-biserial correlation, and DIF estimates. Items that

exhibited extreme statistics were considered biased and removed from the item bank (47 out of 685).

From the studies conducted with the Quantile Framework item bank (Palm Beach County [FL] linking study,

Mississippi linking study, Department of Defense/TerraNova linking study, and Wyoming linking study), approximately

6.9% of the items in any one study were flagged as exhibiting DIF using the Mantel-Haenszel statistic and the

t-statistic from Winsteps. For each linking study the following steps were used to review the items: (1) flag the items

exhibiting DIF, (2) review the flagged items to determine if the content of the item is something that all students are

expected to know, and (3) make a decision to retain or delete the item.


Field-Test Analyses—Rasch Item Response Theory

Classical test theory has two basic shortcomings: (1) the use of item indices whose values depend on the particular

group of examinees from which they were obtained, and (2) the use of examinee achievement estimates that depend

on the particular choice of items selected for a test. The basic premises of item response theory (IRT) overcome

these shortcomings by predicting the performance of an examinee on a test item based on a set of underlying

abilities (Hambleton & Swaminathan, 1985). The relationship between an examinee’s item performance and the

set of traits underlying item performance can be described by a monotonically increasing function called an item

characteristic curve (ICC). This function specifies that as the level of the trait increases, the probability of a correct

response to an item increases.

The conversion of observations into measures can be accomplished using the Rasch (1980) model, which states

a requirement for the way item difficulties (calibrations) and observations (count of correct items) interact in a

probability model to produce measures. The Rasch item response theory model expresses the probability that a

person (n ) answers a certain item (i ) correctly by the following relationship (Hambleton & Swaminathan, 1985;

Wright & Linacre, 1994):

$$P_{ni} = \frac{e^{b_n - d_i}}{1 + e^{b_n - d_i}} \qquad \text{(Equation 2)}$$

where $d_i$ is the difficulty of item $i$ ($i = 1, 2, \ldots,$ number of items),

$b_n$ is the achievement of person $n$ ($n = 1, 2, \ldots,$ number of persons),

$b_n - d_i$ is the difference between the achievement of person $n$ and the difficulty of item $i$, and

$P_{ni}$ is the probability that examinee $n$ responds correctly to item $i$.
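Equation 2 can be evaluated directly. The following is a minimal sketch in which the function and argument names are chosen here for illustration; both inputs are on the logit scale.

```python
import math

def rasch_probability(ability, difficulty):
    """Equation 2: probability that a person with the given achievement
    answers an item of the given difficulty correctly."""
    exponent = math.exp(ability - difficulty)
    return exponent / (1.0 + exponent)

# When achievement equals difficulty, the probability is one half.
p_equal = rasch_probability(0.5, 0.5)
# One logit above the item difficulty.
p_above = rasch_probability(1.0, 0.0)
# One logit below the item difficulty.
p_below = rasch_probability(0.0, 1.0)
print(p_equal, round(p_above, 4), round(p_below, 4))
```

The probability depends only on the difference between achievement and difficulty, so `p_above` and `p_below` sum to one.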

The Rasch measurement model assumes that item difficulty is the only item characteristic that influences the

examinee’s performance. In other words, all items are equally discriminating in their ability to identify low-achieving

persons and high-achieving persons (Bond & Fox, 2001; Hambleton, Swaminathan, & Rogers, 1991). In addition, the

lower asymptote is zero, which specifies that examinees of very low achievement have zero probability of correctly

answering the item. The Rasch model has the following assumptions:

(1) unidimensionality: only one construct is assessed by the set of items

(2) local independence: when abilities influencing test performance are held constant, an examinee’s

responses to any pair of items are statistically independent (conditional independence, i.e., an examinee

scores similarly on several items only because of his or her achievement, not because

the items are correlated)


The Rasch model is based on fairly restrictive assumptions, but it is appropriate for criterion-referenced

assessments.

For the Quantile Framework field study, all students and items were submitted to a Winsteps analysis using a logit

convergence criterion of 0.0001 and a residual convergence criterion of 0.001. Items that a student skipped were

treated as missing, rather than being treated as incorrect. Only students who responded to at least 20 items were

included in the analyses (22 students were omitted, 0.22%).

The Quantile measure comes from multiplying the logit value by 180 and is anchored at 656Q. The multiplier and

the anchor point will be discussed in a later section. Table 4 shows the mean and median Quantile measures for all

students with complete data at each grade level. While there is not a monotonically increasing trend in the mean and

median Quantile measures (note that the measure for Grade 6 is higher than the measure for Grade 7), the measures

are not significantly different. Results from other studies (e.g., PASeries Math) did exhibit a monotonically increasing

function.

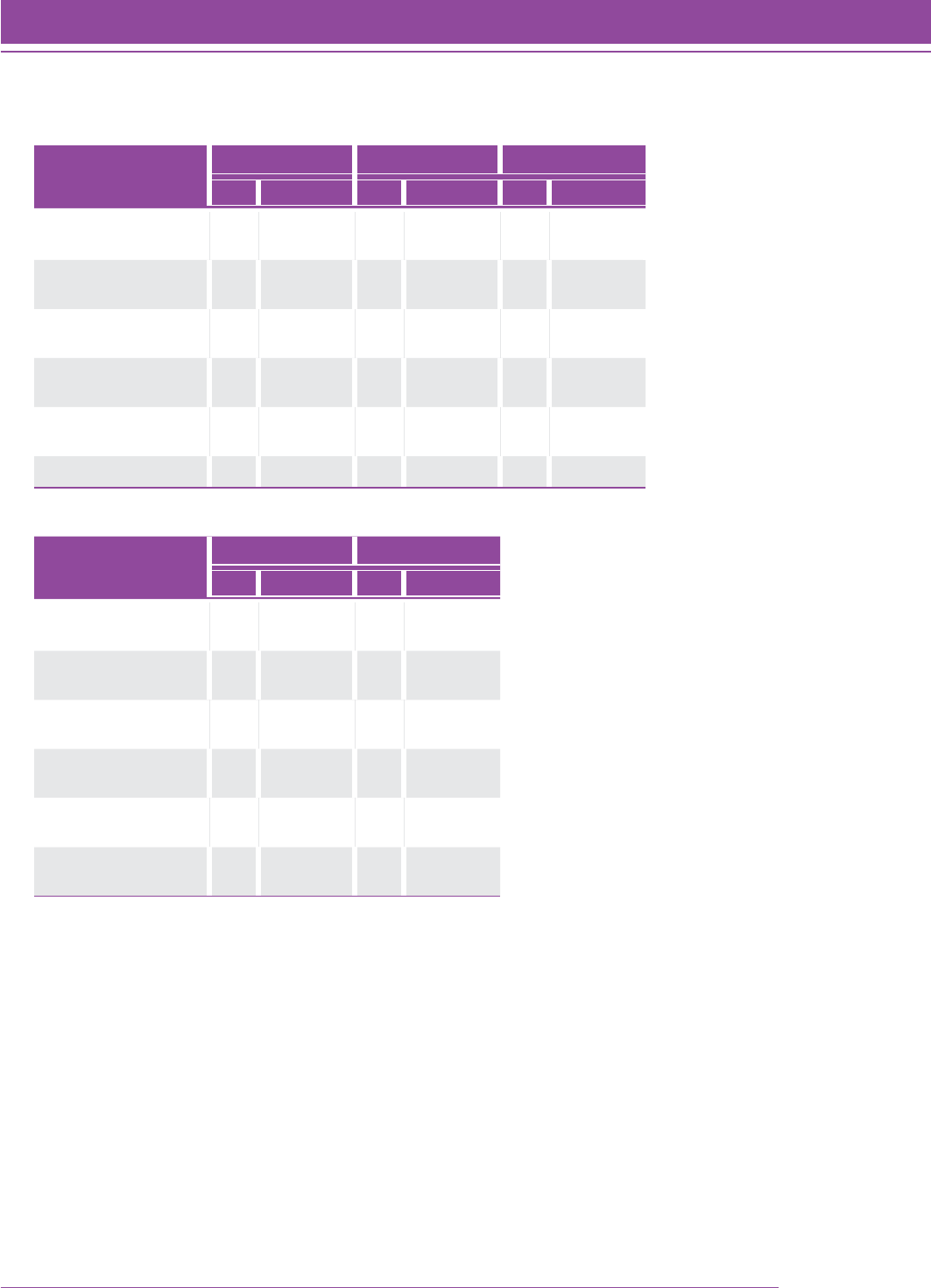

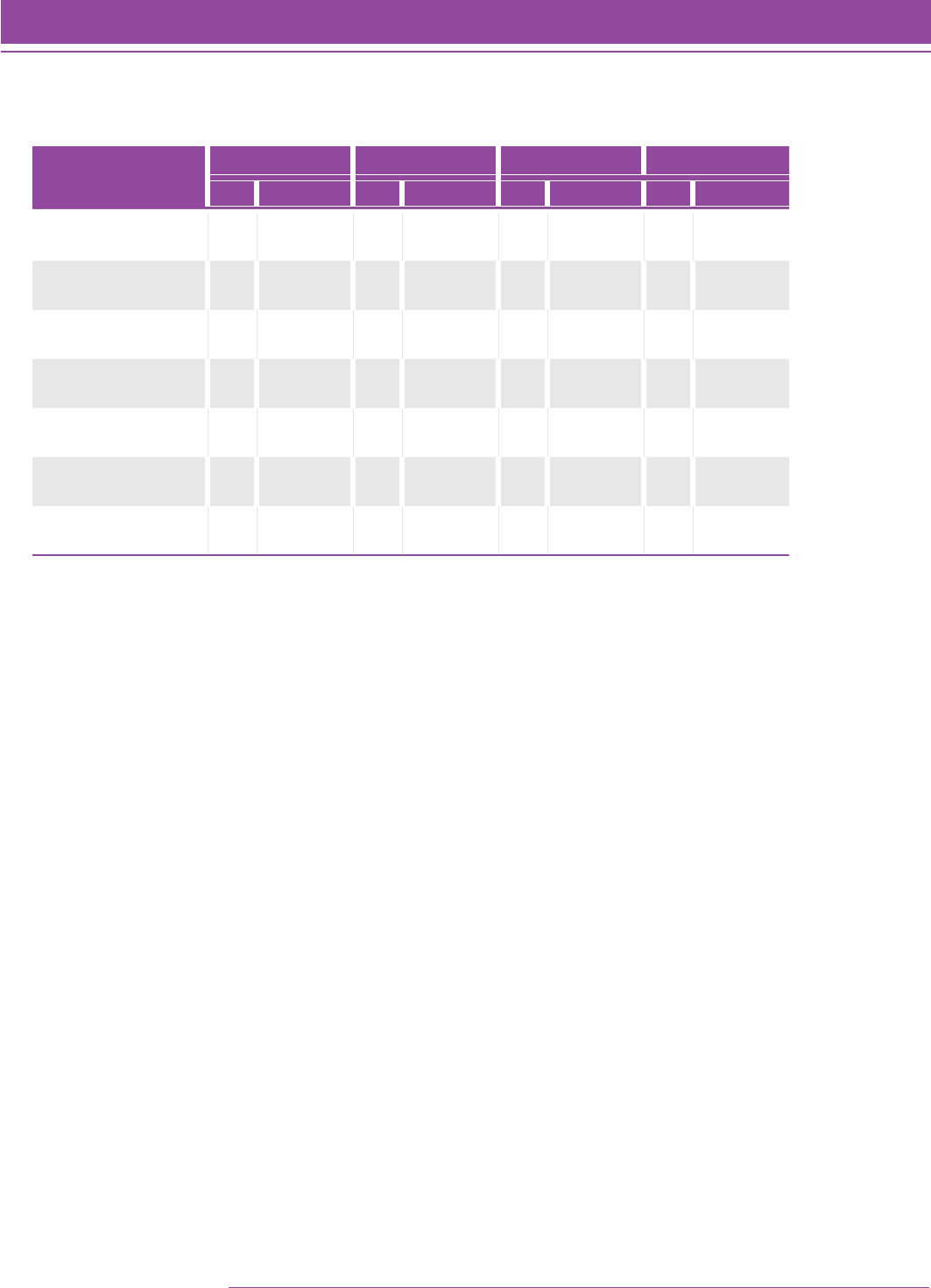

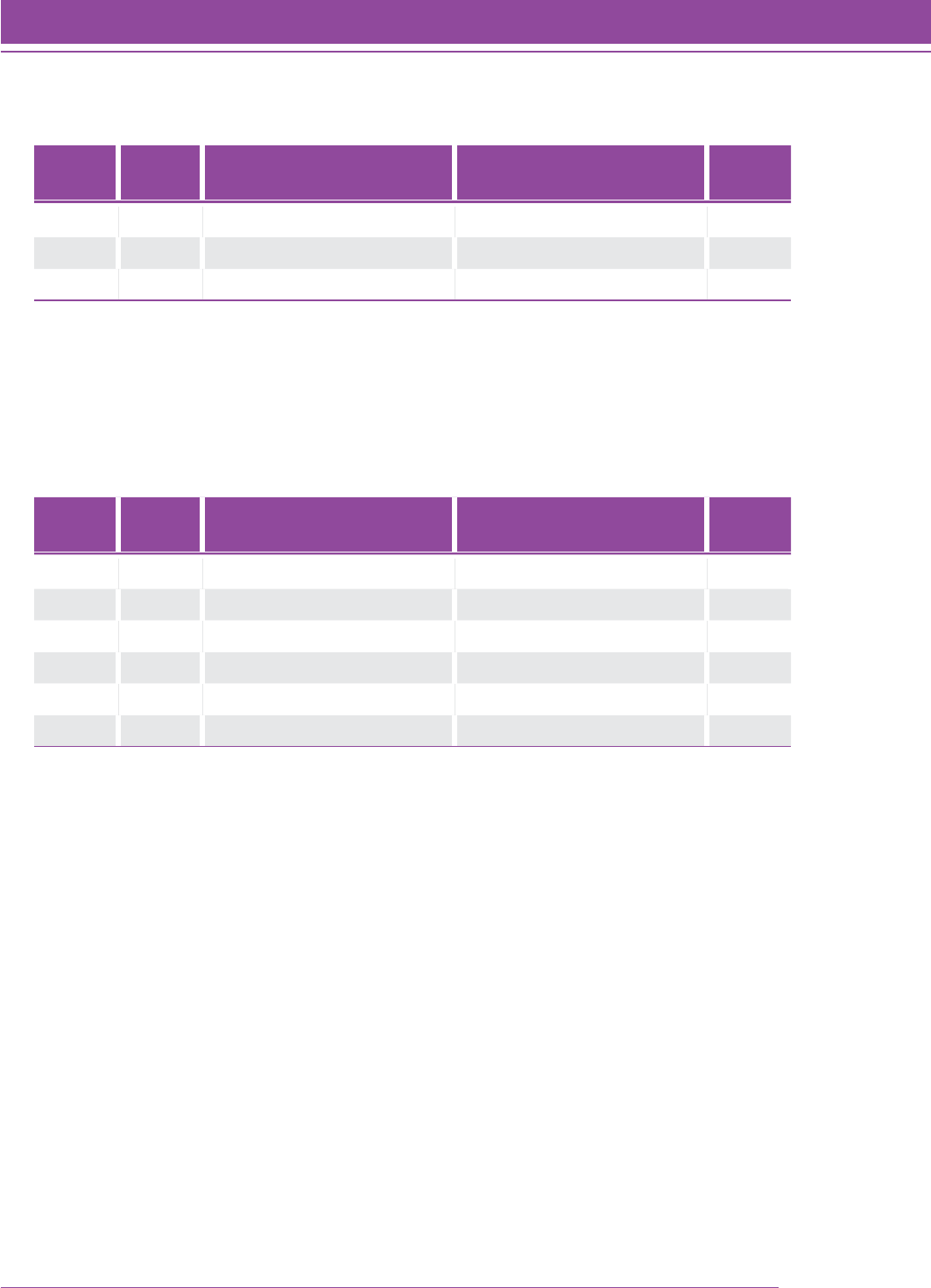

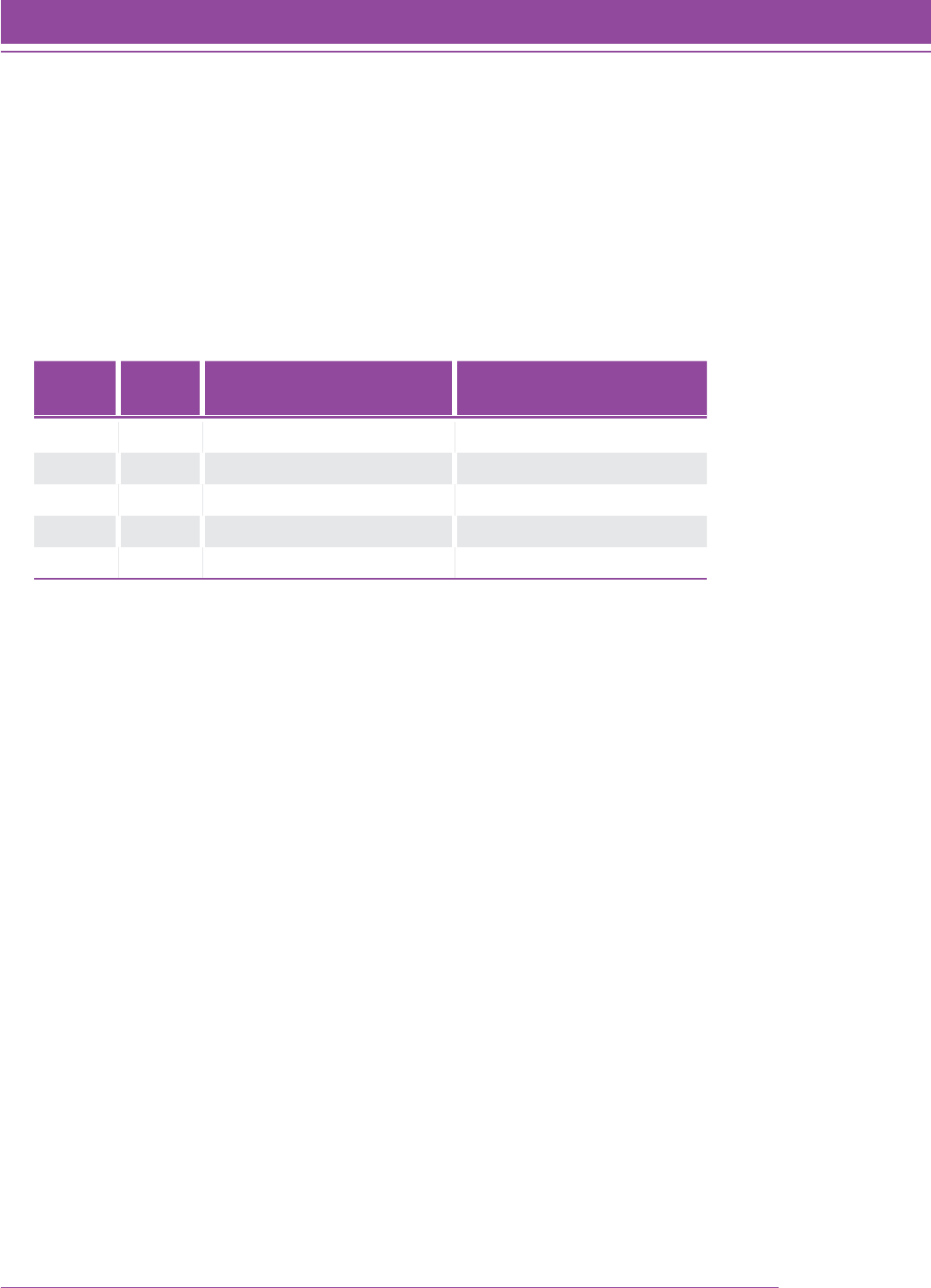

TABLE 4. Mean and median Quantile measure for N = 9,656 students with complete data.

| Grade Level | N | Mean Quantile Measure (Standard Deviation) | Median Quantile Measure |
|---|---|---|---|
| 2 | 1,275 | 320.68 (189.11) | 323 |
| 3 | 1,339 | 511.41 (157.69) | 516 |
| 4 | 1,427 | 655.45 (157.50) | 667 |
| 5 | 1,337 | 790.06 (167.71) | 771 |
| 6 | 959 | 871.82 (153.02) | 865 |
| 7 | 1,244 | 860.52 (174.16) | 841 |
| 8 | 1,004 | 929.01 (157.63) | 910 |
| 9 | 482 | 958.69 (152.81) | 953 |
| 10 | 251 | 1019.97 (162.87) | 1005 |
| 11 | 200 | 1127.34 (178.57) | 1131 |
| 12 | 138 | 1185.90 (189.19) | 1164 |


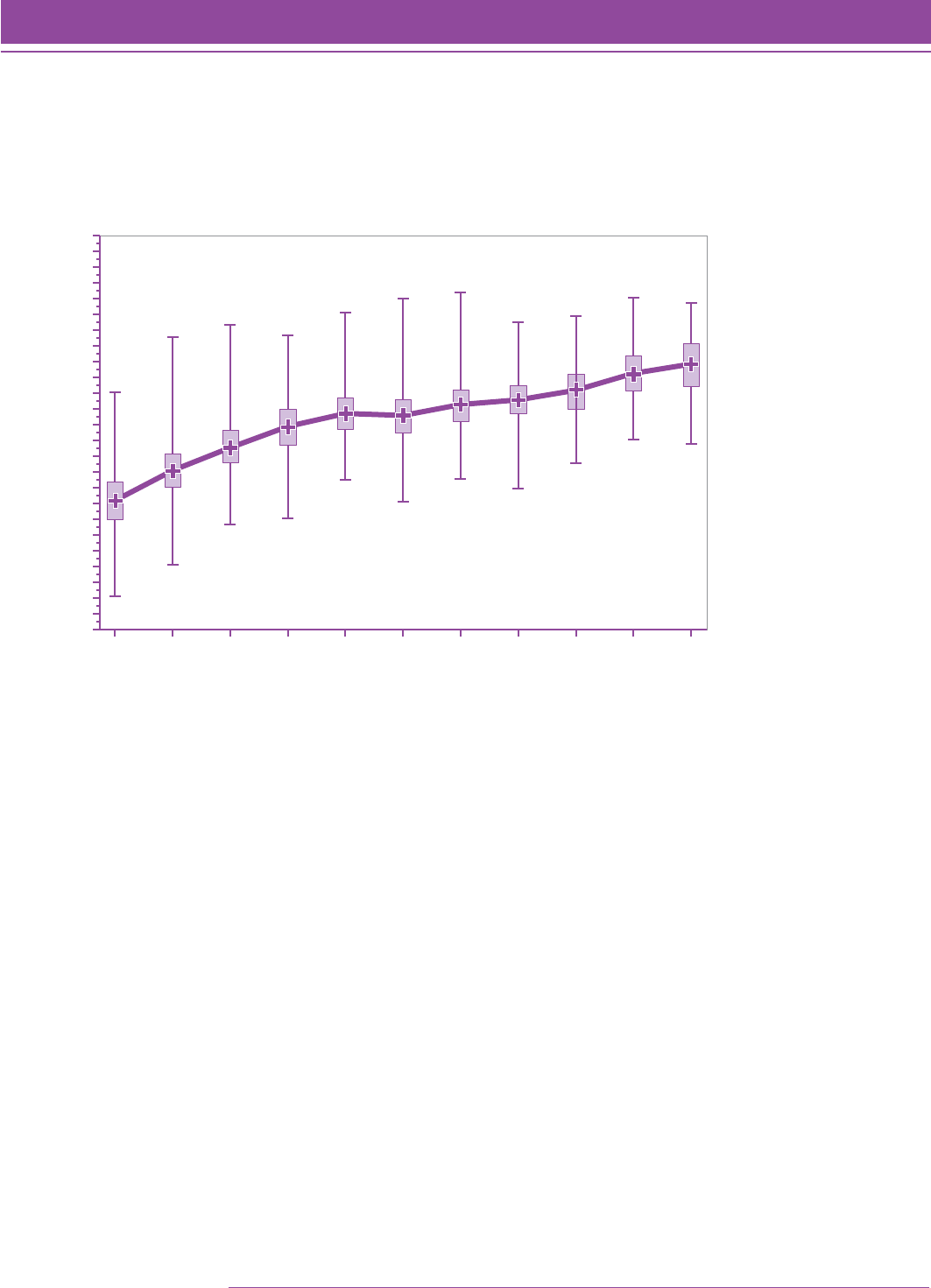

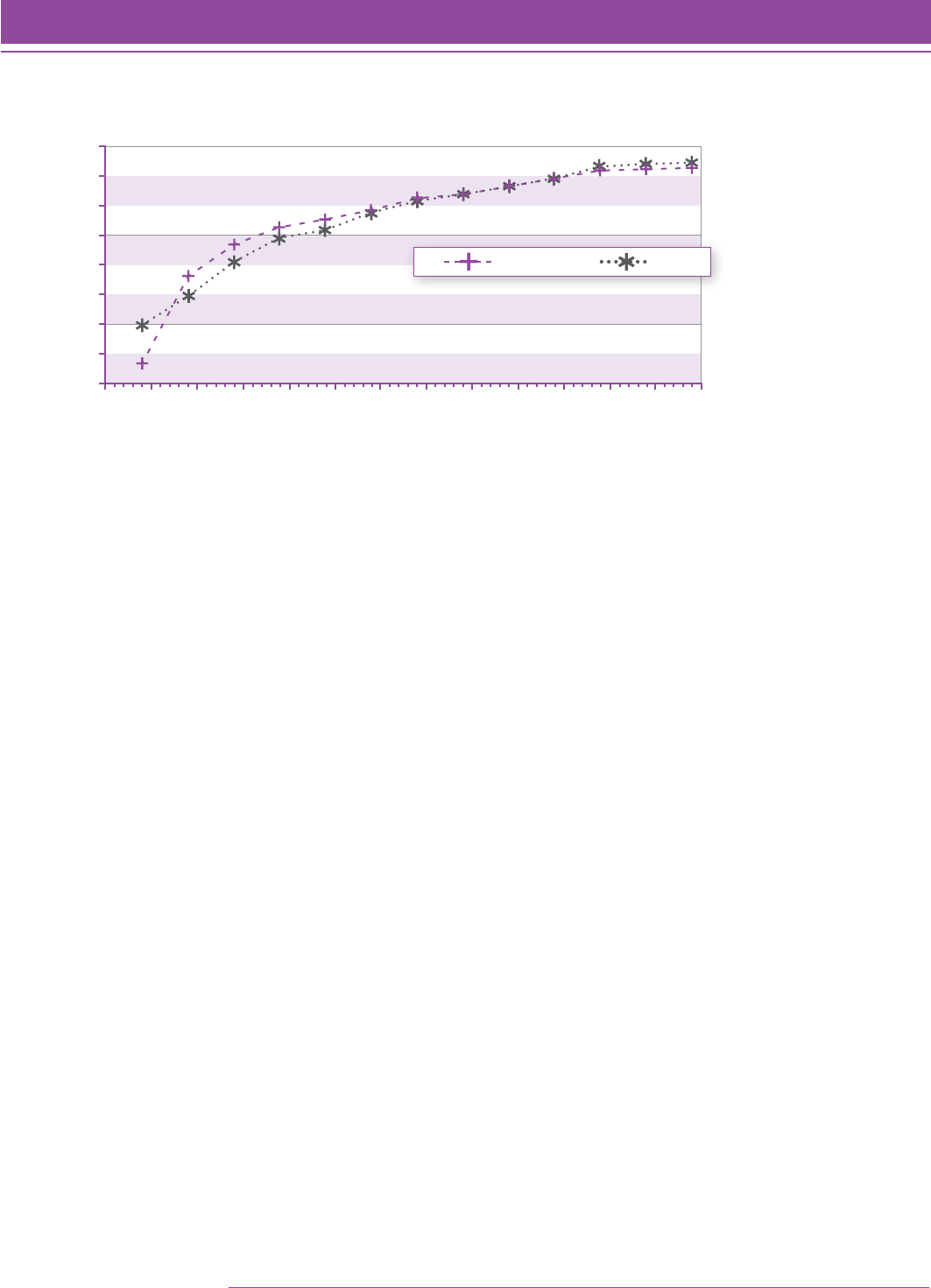

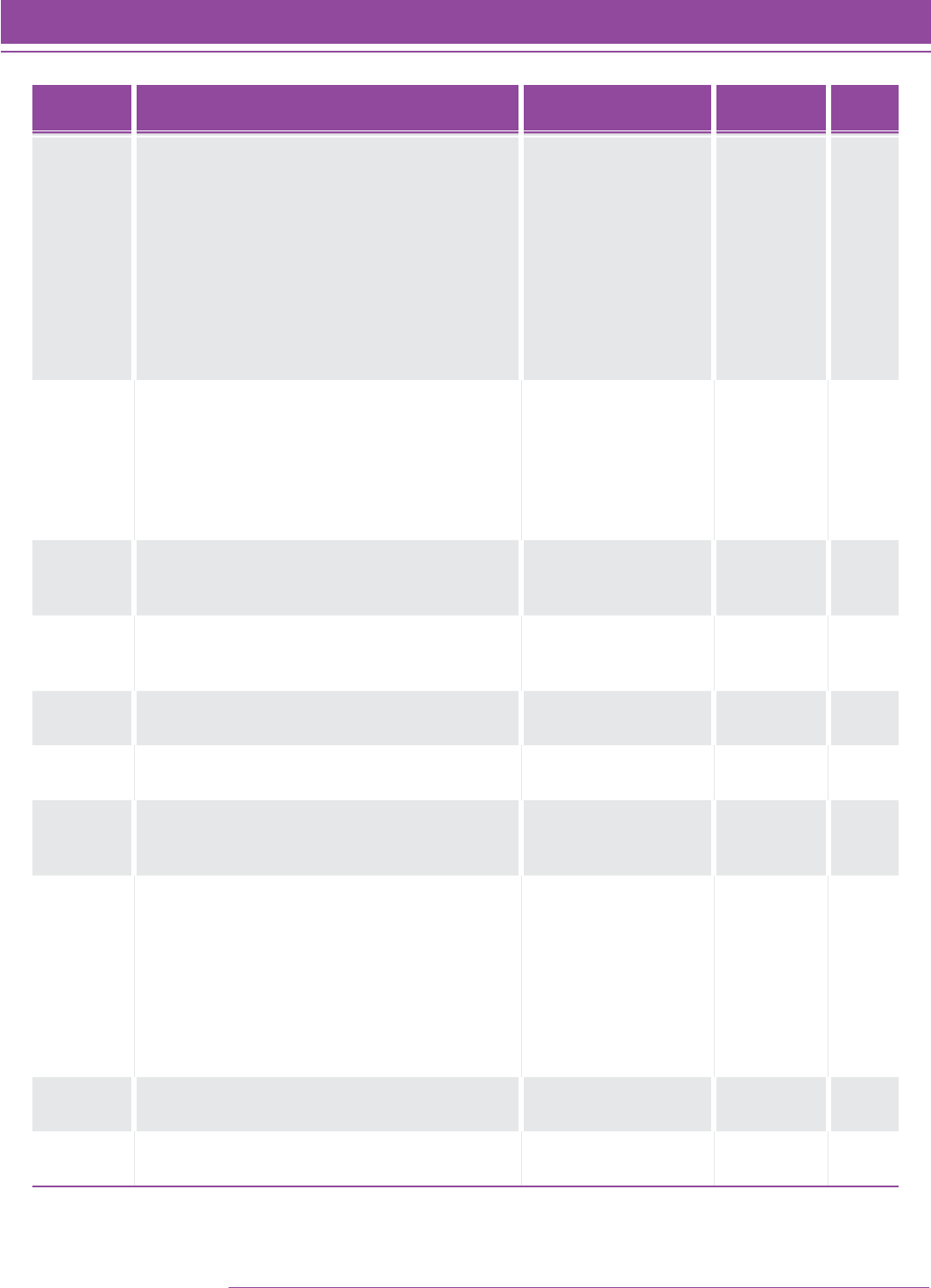

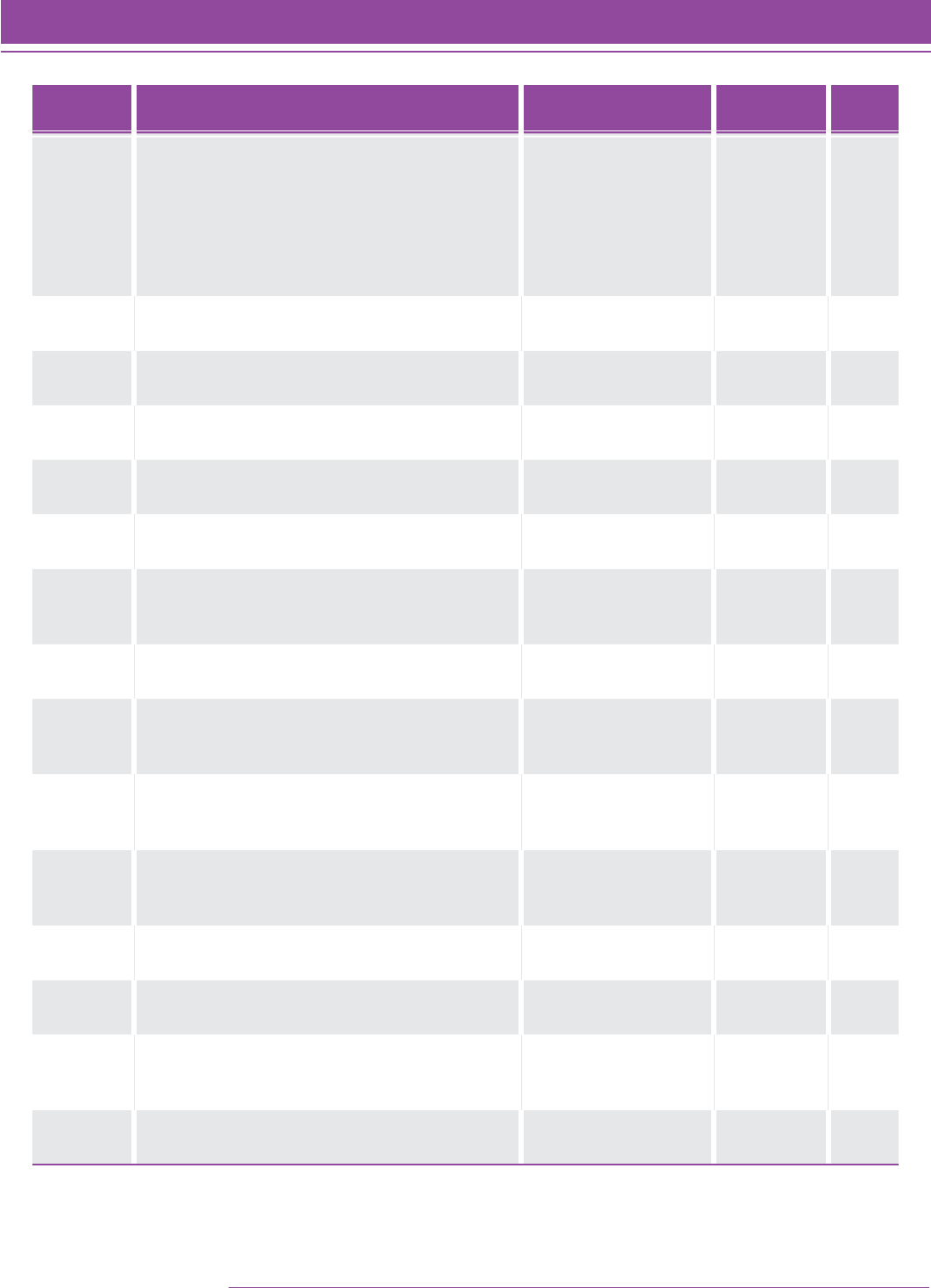

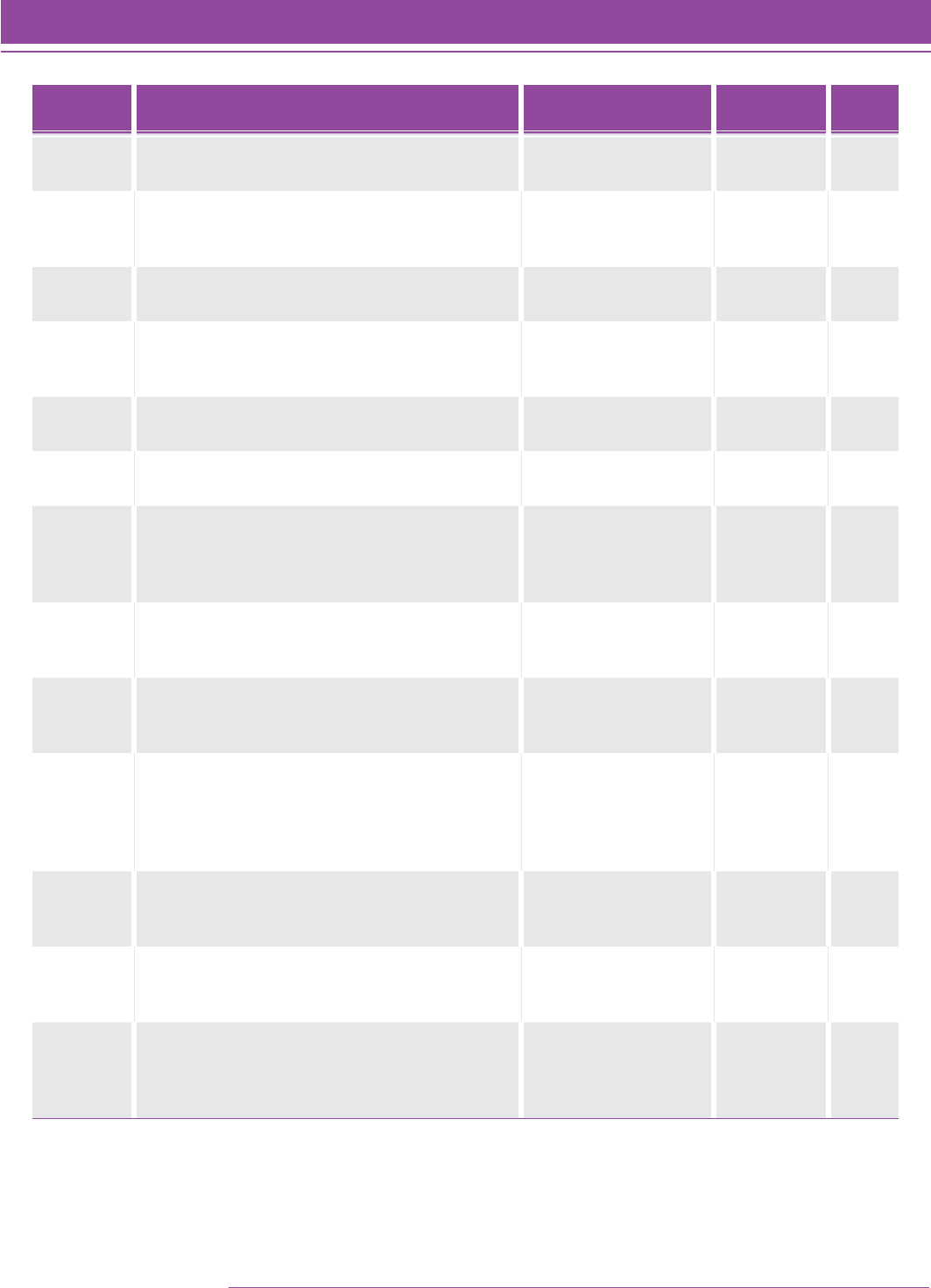

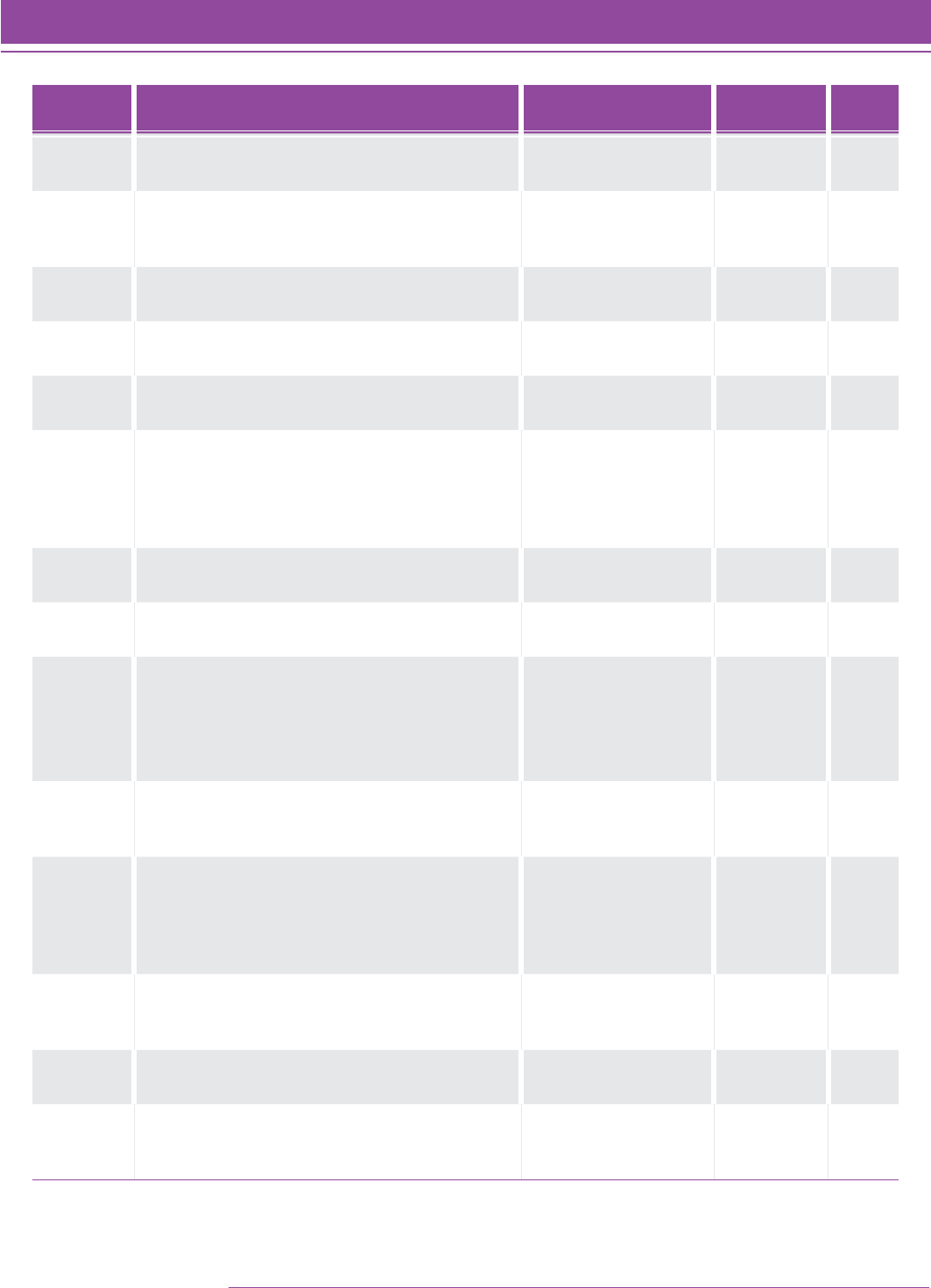

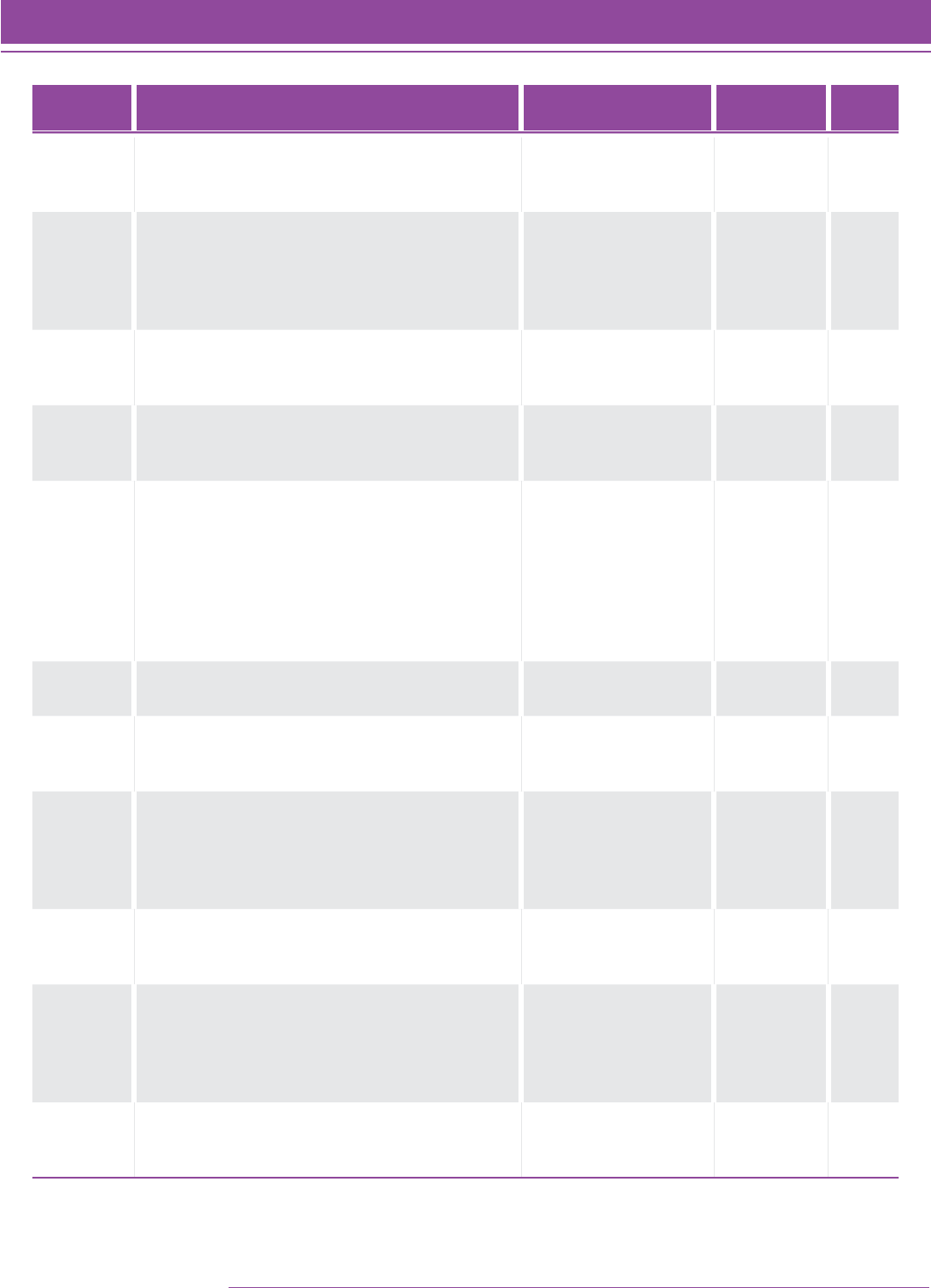

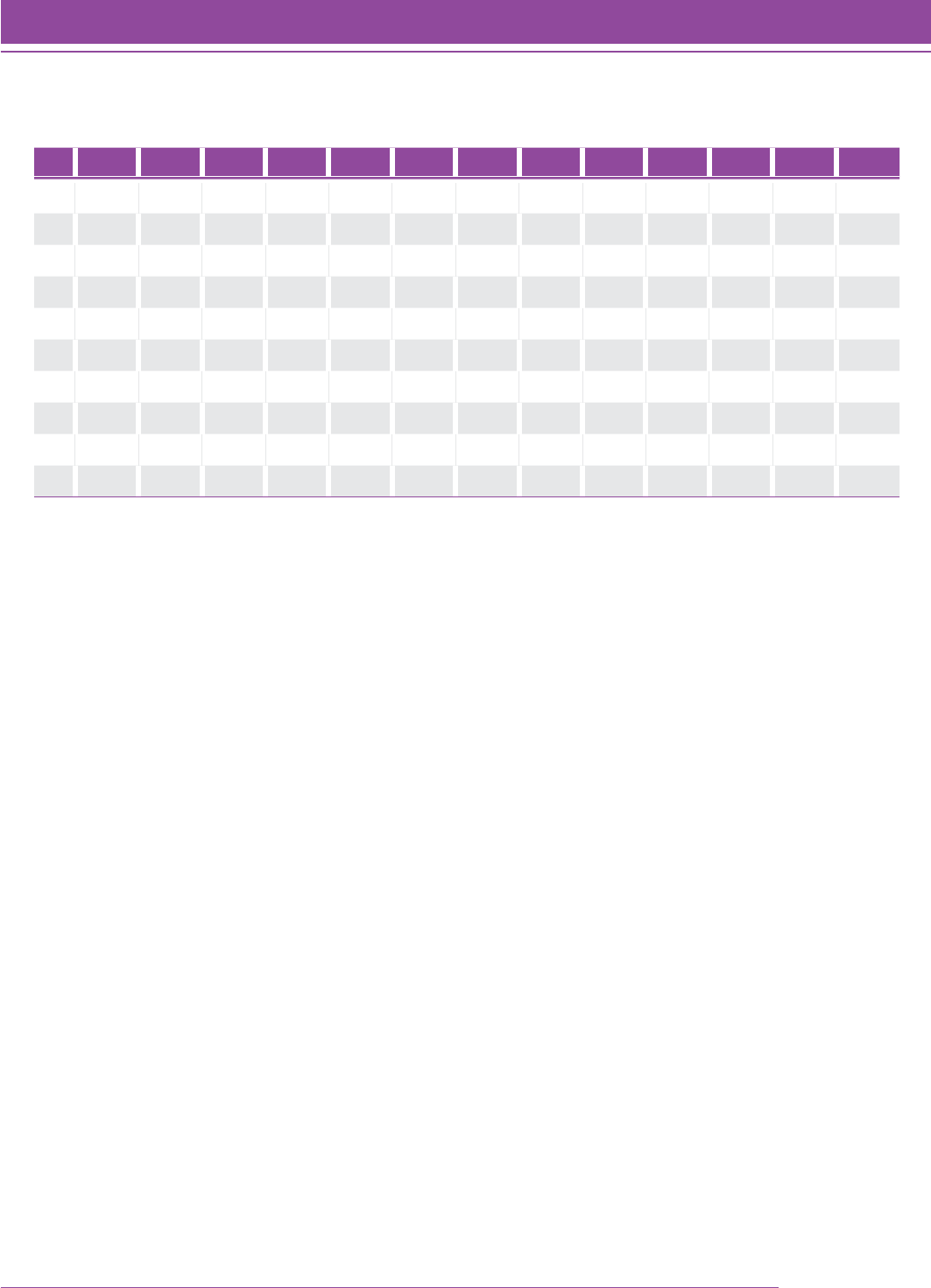

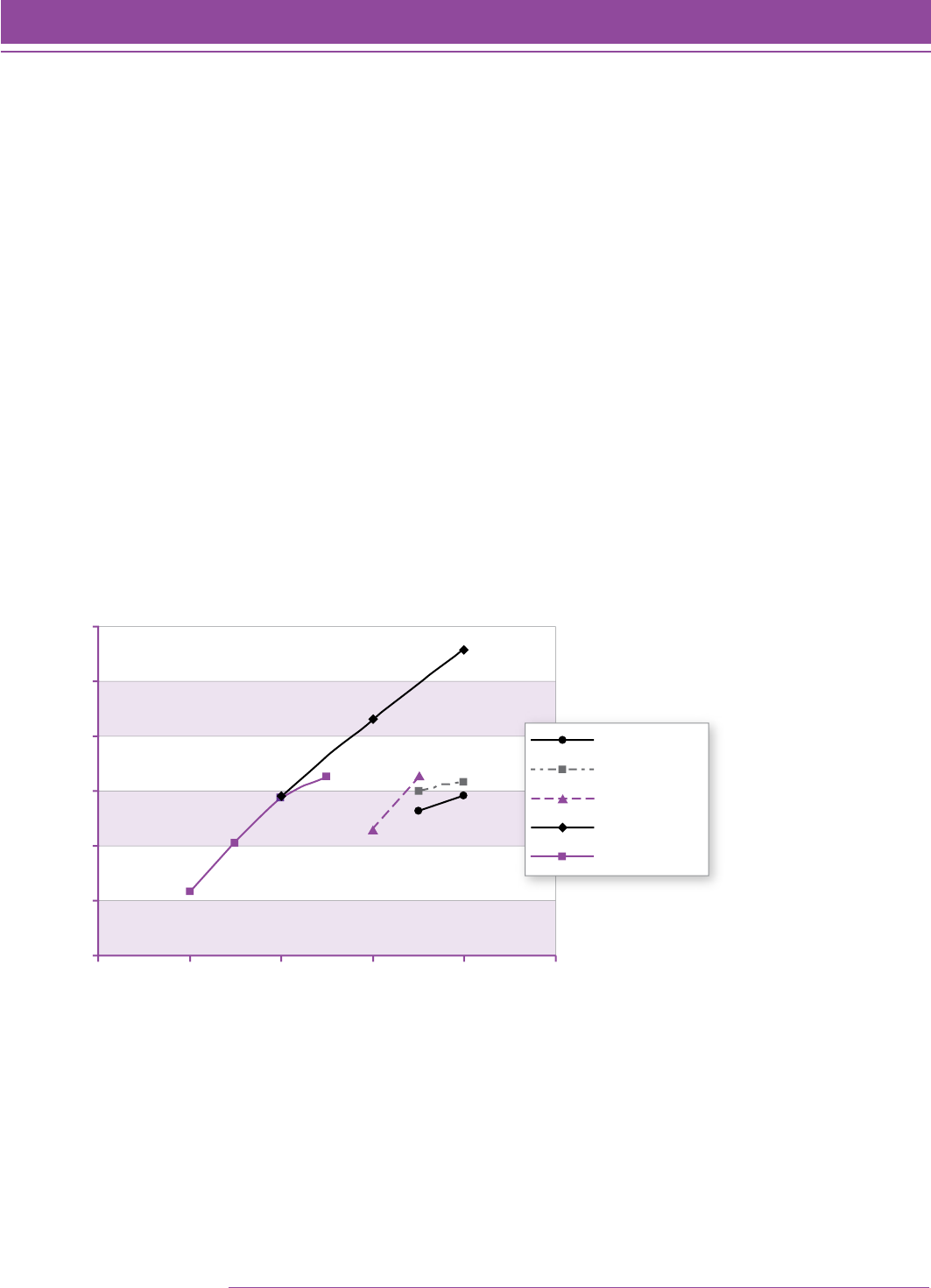

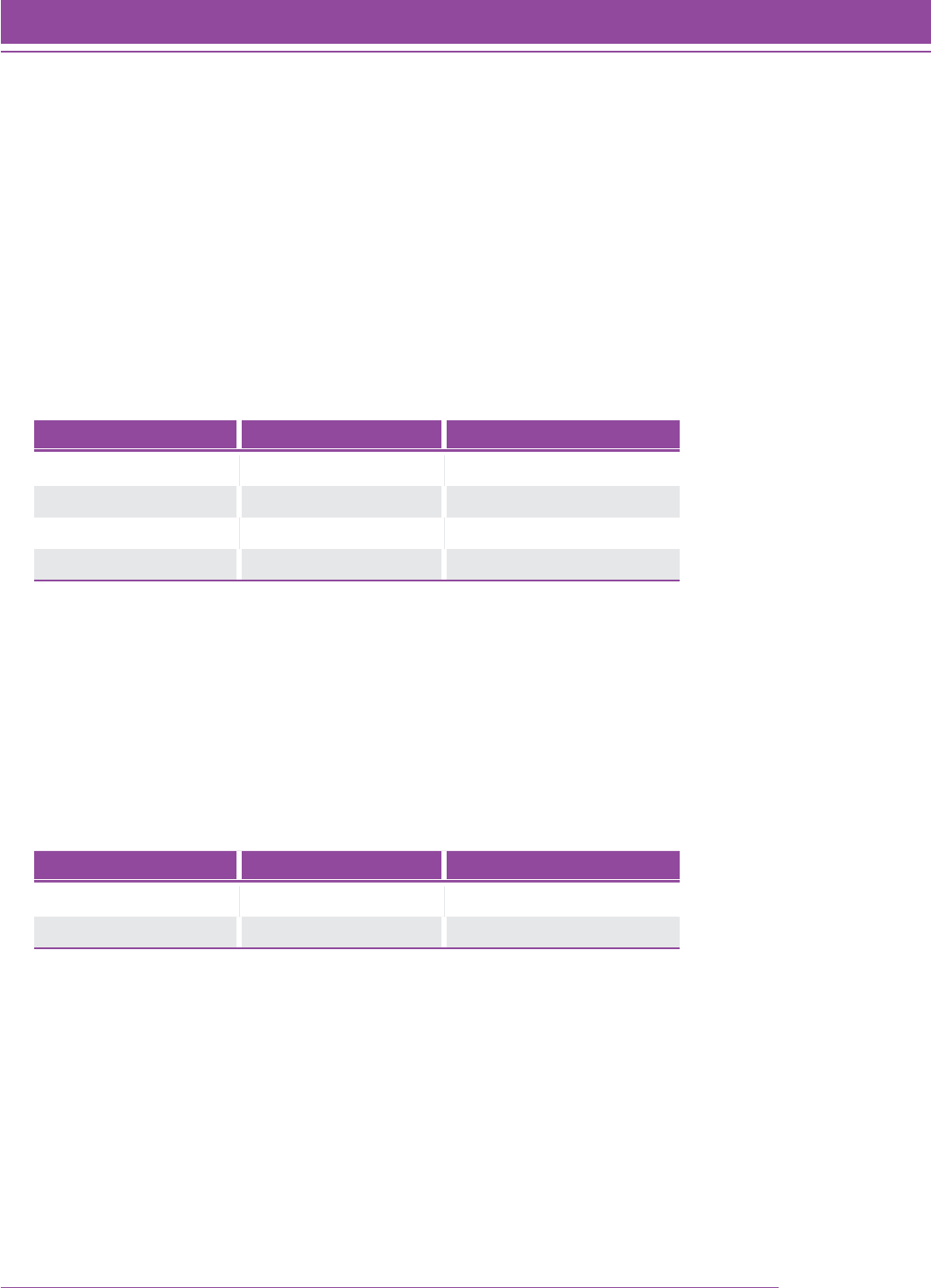

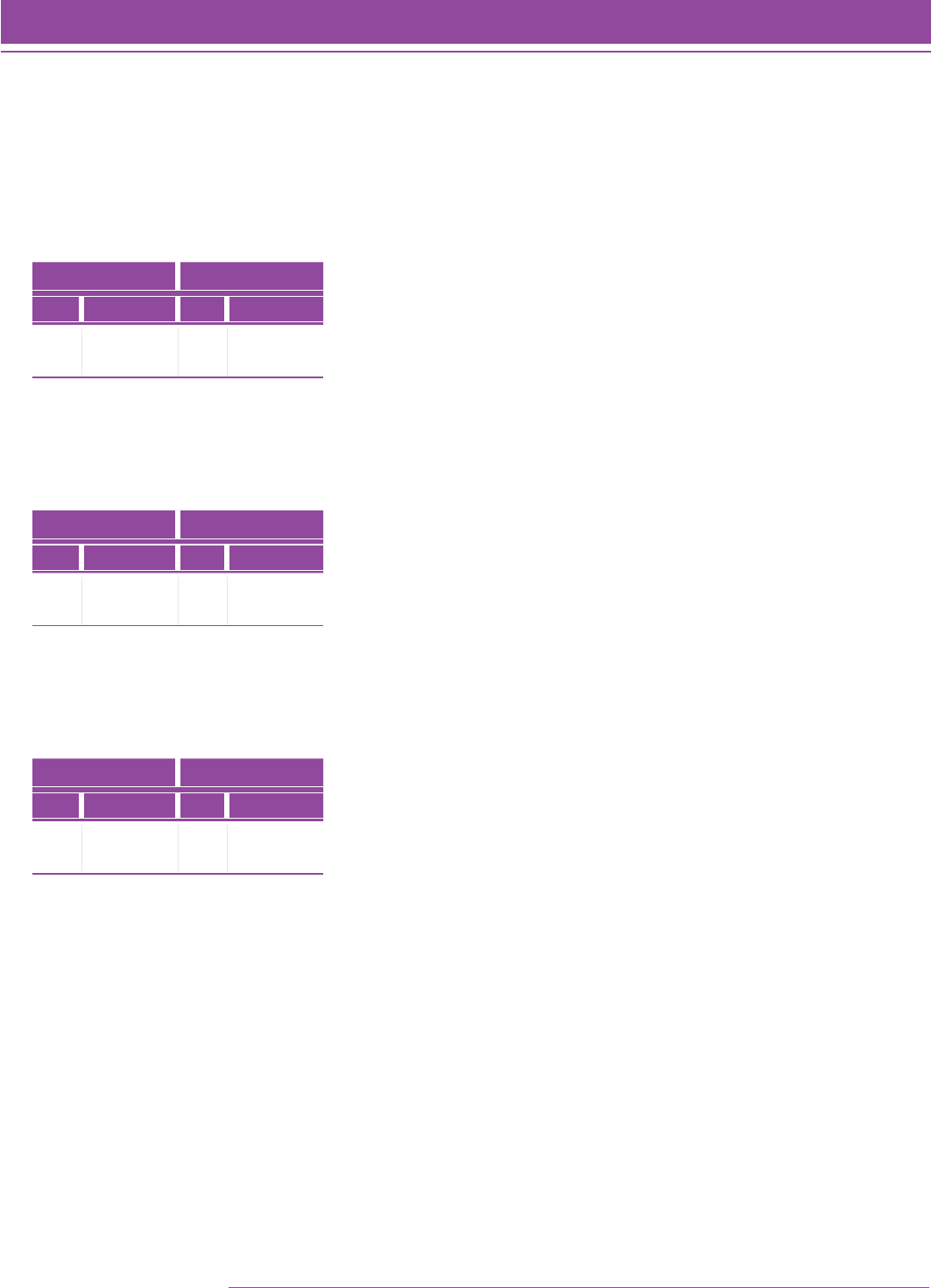

Figure 2 shows the relationship between grade level and Quantile measure. The box and whisker plot shows the

score progression from grade to grade (the x-axis). Across all 9,656 students, the correlation between grade and

Quantile measure was 0.76 in this initial field study.

FIGURE 2. Rasch achievement estimates of N = 9,656 students with complete data.

[Box and whisker plot. x-axis: Grade Distribution (Grades 2 through 12); y-axis: Quantile Measure (-500 to 2000).]

All students with outfit mean square statistics greater than or equal to 1.8 were removed from further analyses. A

total of 480 students (4.97%) were removed from further analyses. The number of students removed ranged from

8.47% (108) in Grade 2 to 2.29% (22) in Grade 6 with a mean percent decrease of 4.45% per grade.
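The screening figures above can be reproduced from the per-grade counts reported in Tables 4 and 5. A minimal sketch, with variable names chosen here for illustration:

```python
# Students per grade before the outfit screen (Table 4) and after it (Table 5).
n_before = {2: 1275, 3: 1339, 4: 1427, 5: 1337, 6: 959, 7: 1244,
            8: 1004, 9: 482, 10: 251, 11: 200, 12: 138}
n_after = {2: 1167, 3: 1260, 4: 1352, 5: 1289, 6: 937, 7: 1181,
           8: 955, 9: 466, 10: 244, 11: 191, 12: 134}

# Count and percentage of students removed in each grade.
removed = {g: n_before[g] - n_after[g] for g in n_before}
percent_removed = {g: 100.0 * removed[g] / n_before[g] for g in n_before}

total_removed = sum(removed.values())
overall_percent = 100.0 * total_removed / sum(n_before.values())
# Unweighted mean of the per-grade percentages.
mean_percent = sum(percent_removed.values()) / len(percent_removed)

print(total_removed, round(overall_percent, 2), round(mean_percent, 2))
```

The overall percentage weights every student equally, while the per-grade mean weights every grade equally, which is why the two figures differ.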

All remaining students (9,176) and all items were analyzed with Winsteps using a logit convergence criterion of

0.0001 and a residual convergence criterion of 0.001. Items that a student skipped were treated as missing, rather

than being treated as incorrect. Only students who responded to at least 20 items were included in the analyses.

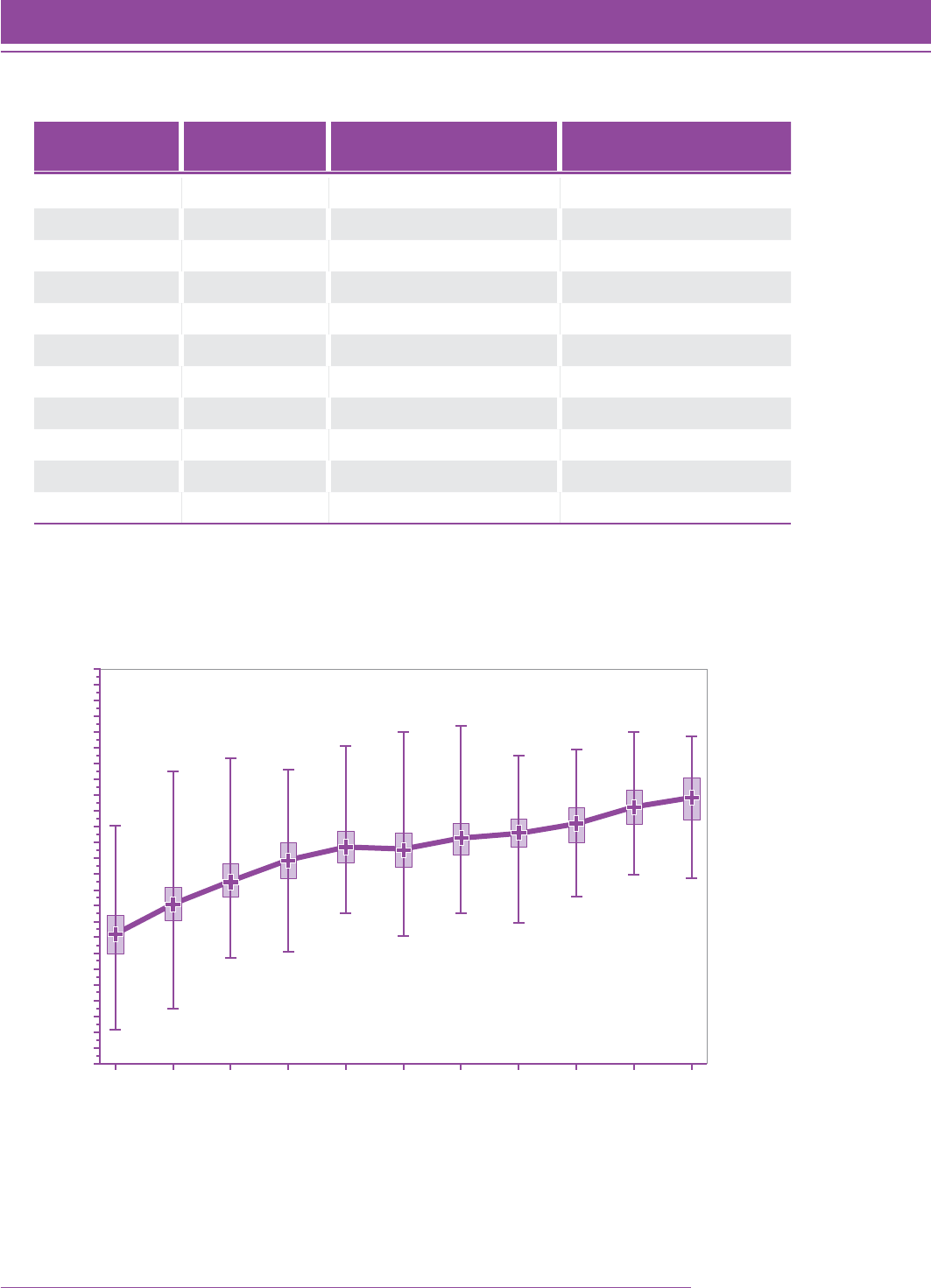

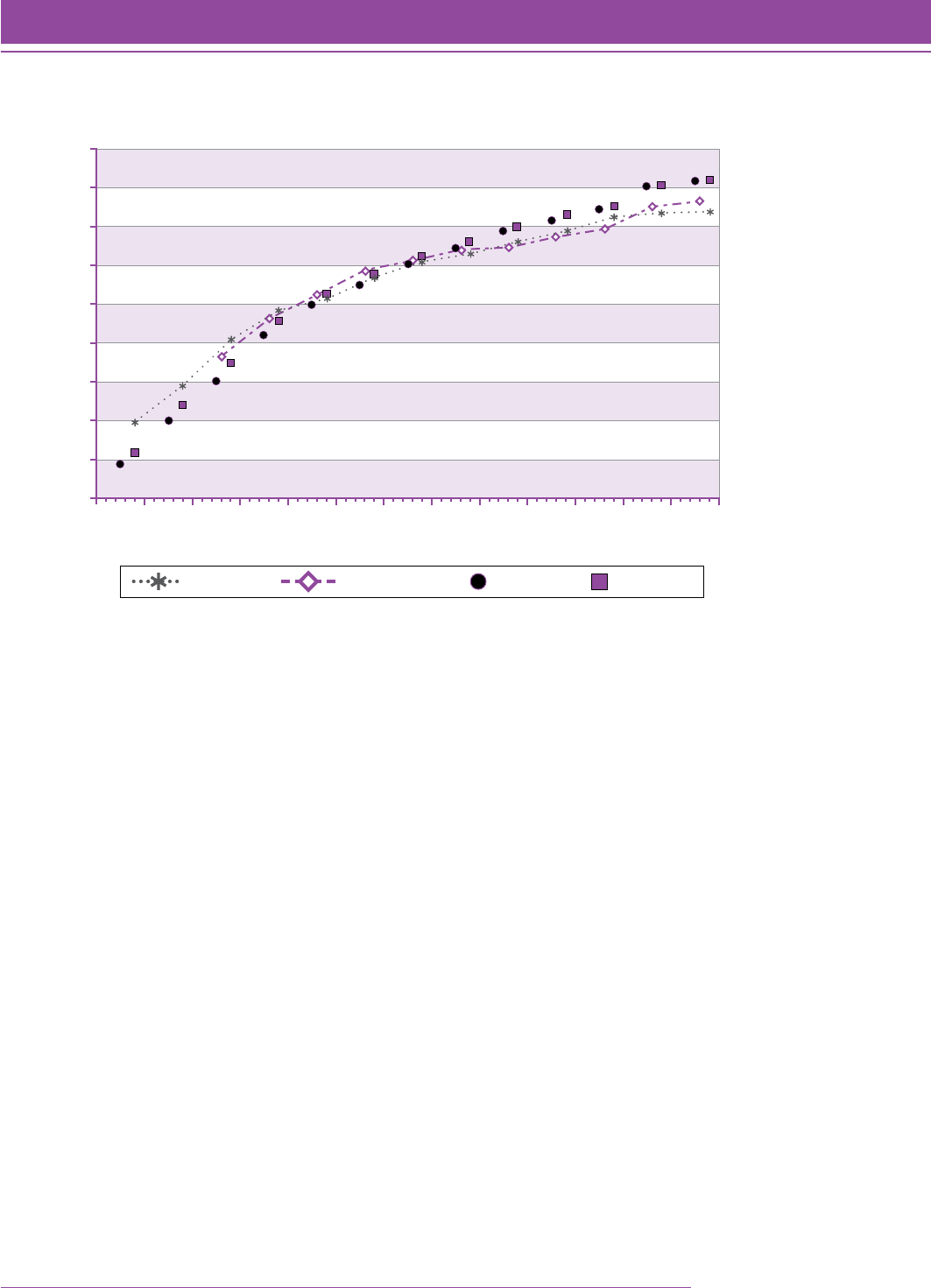

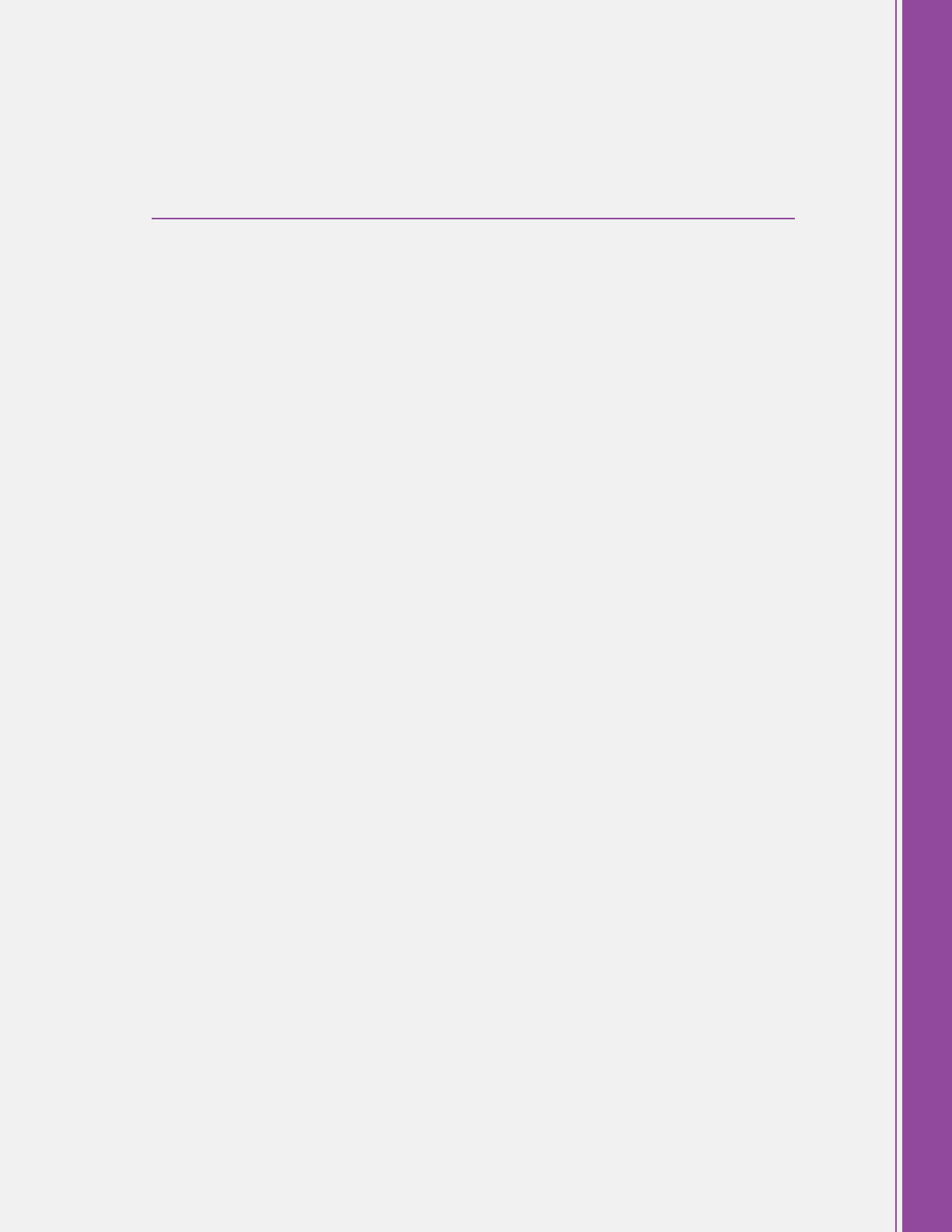

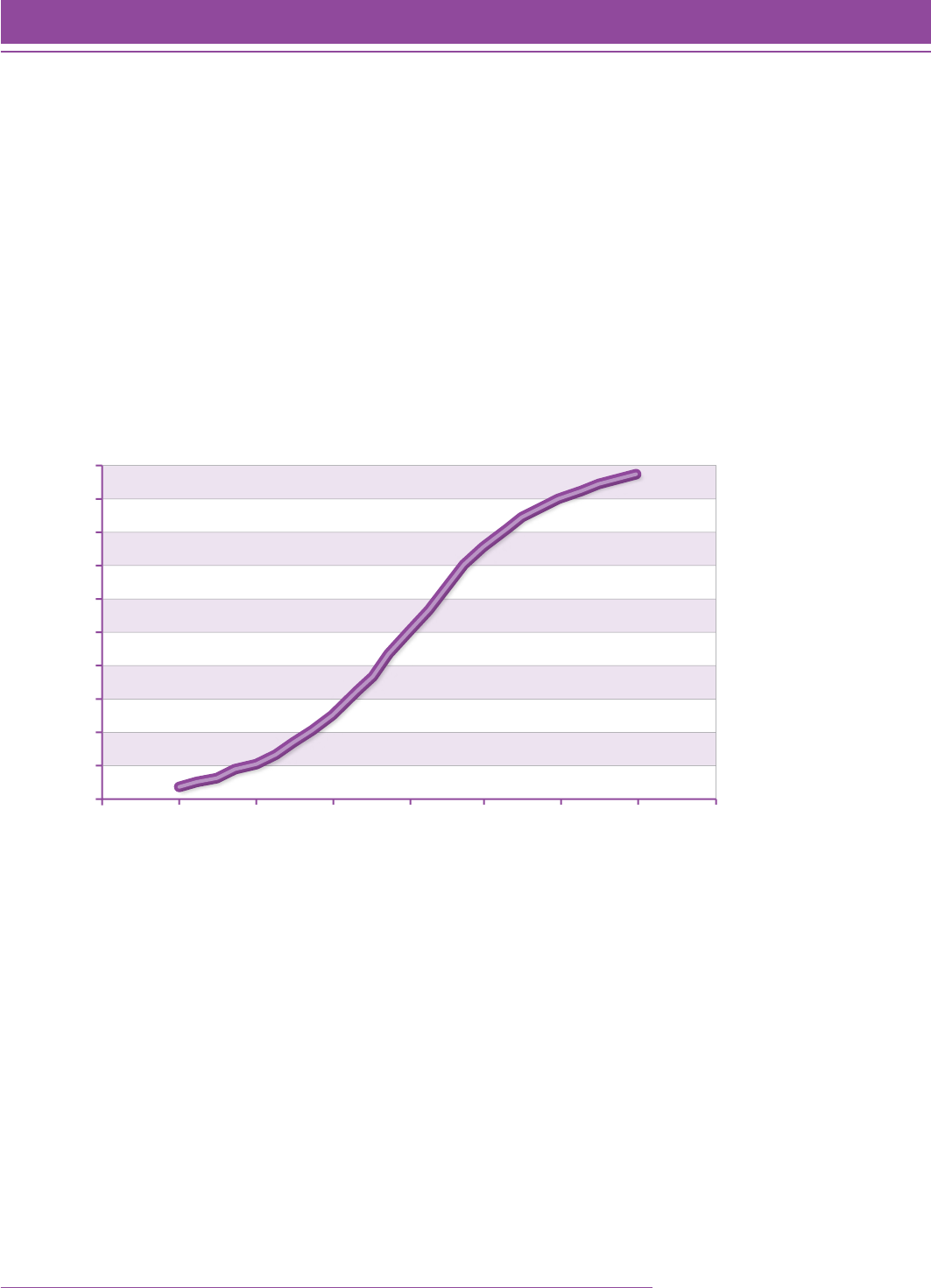

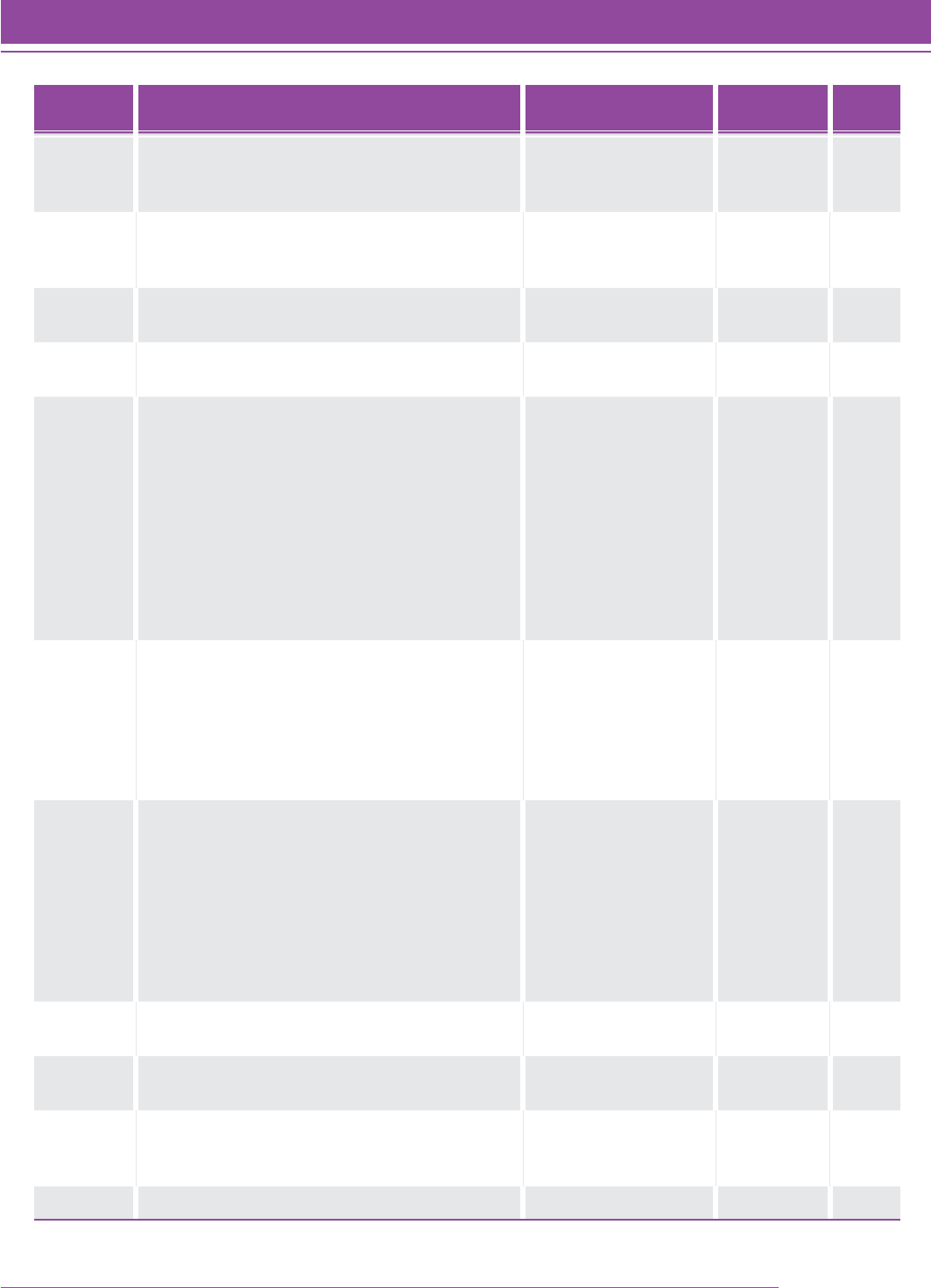

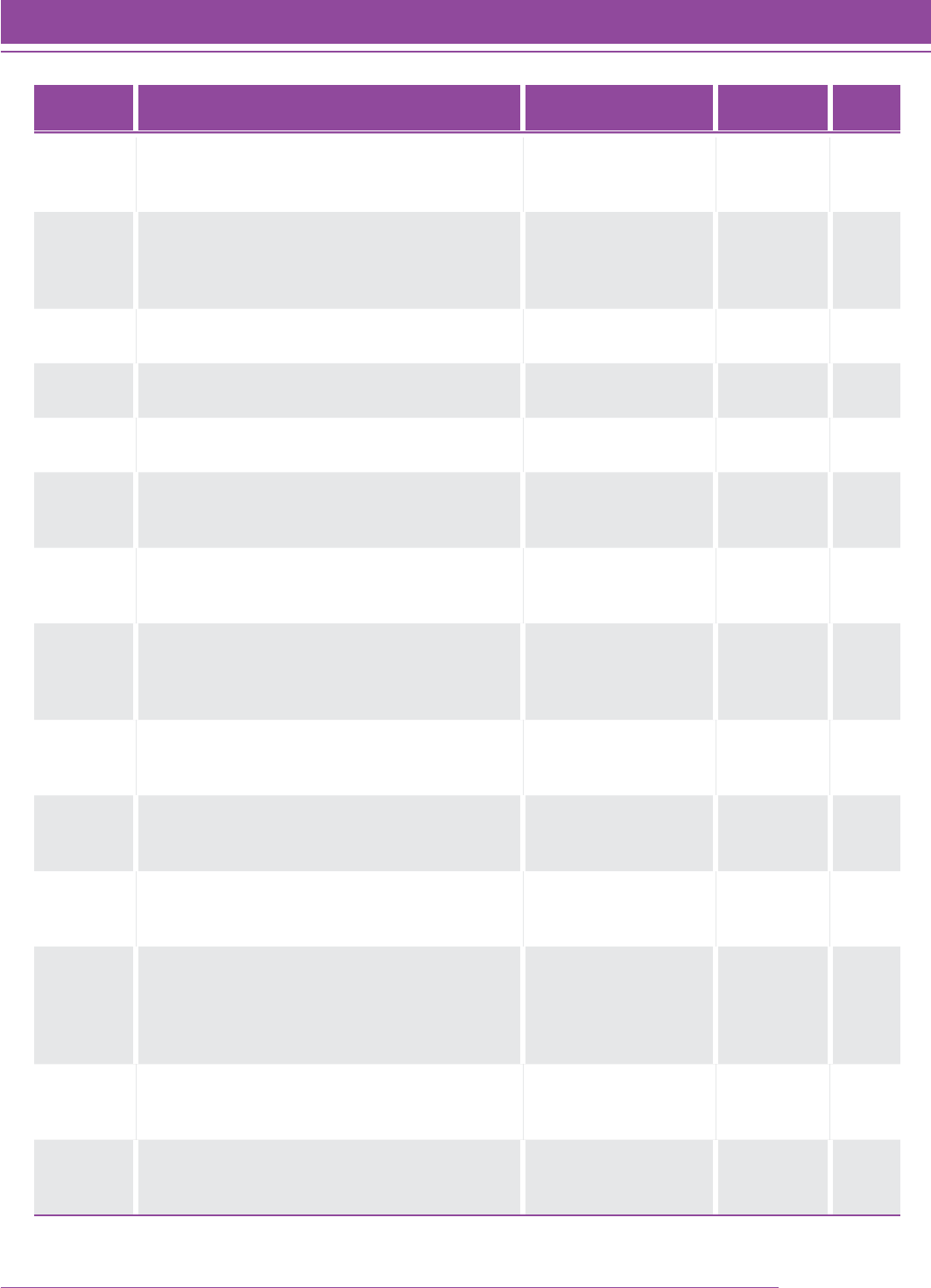

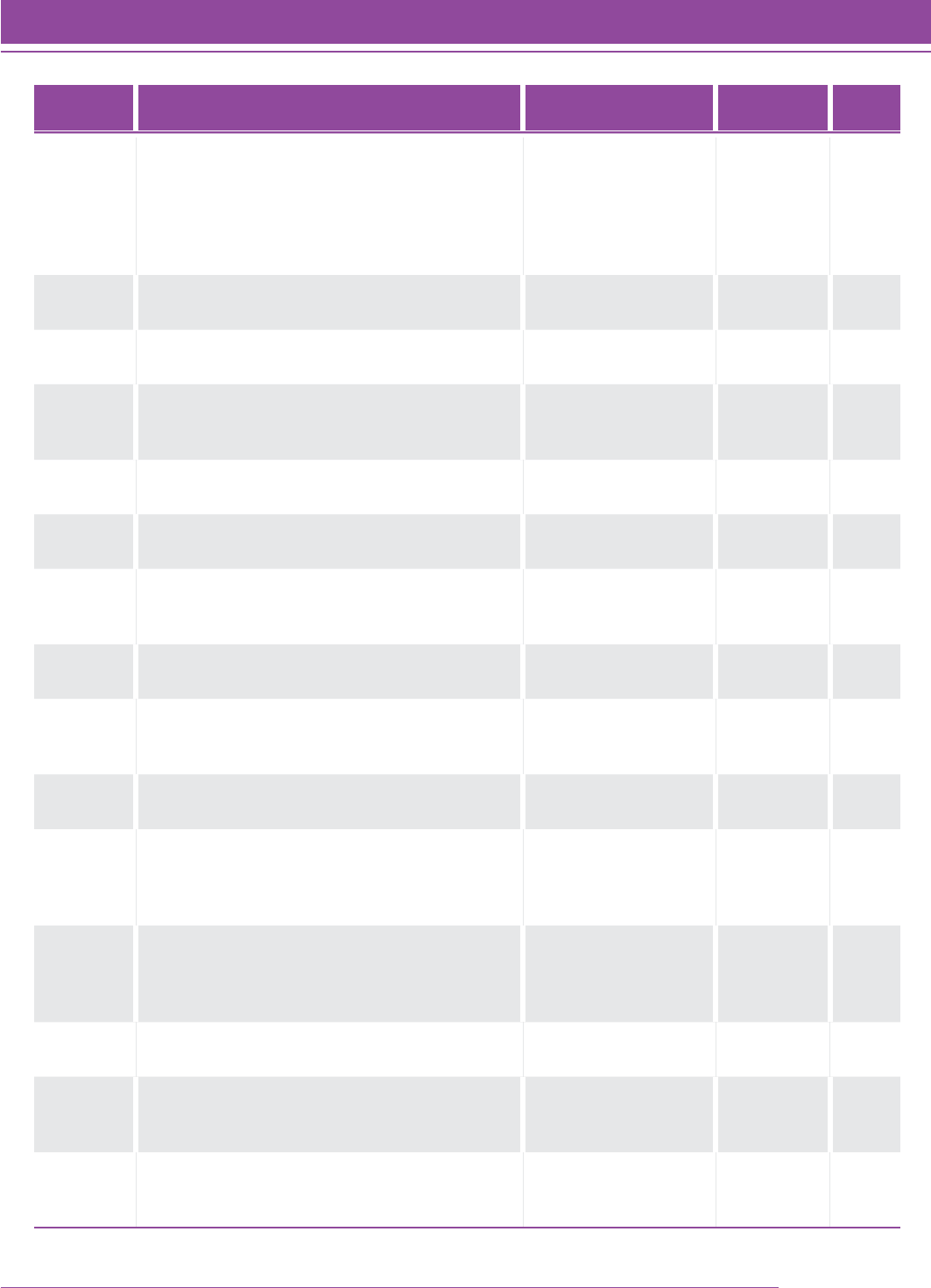

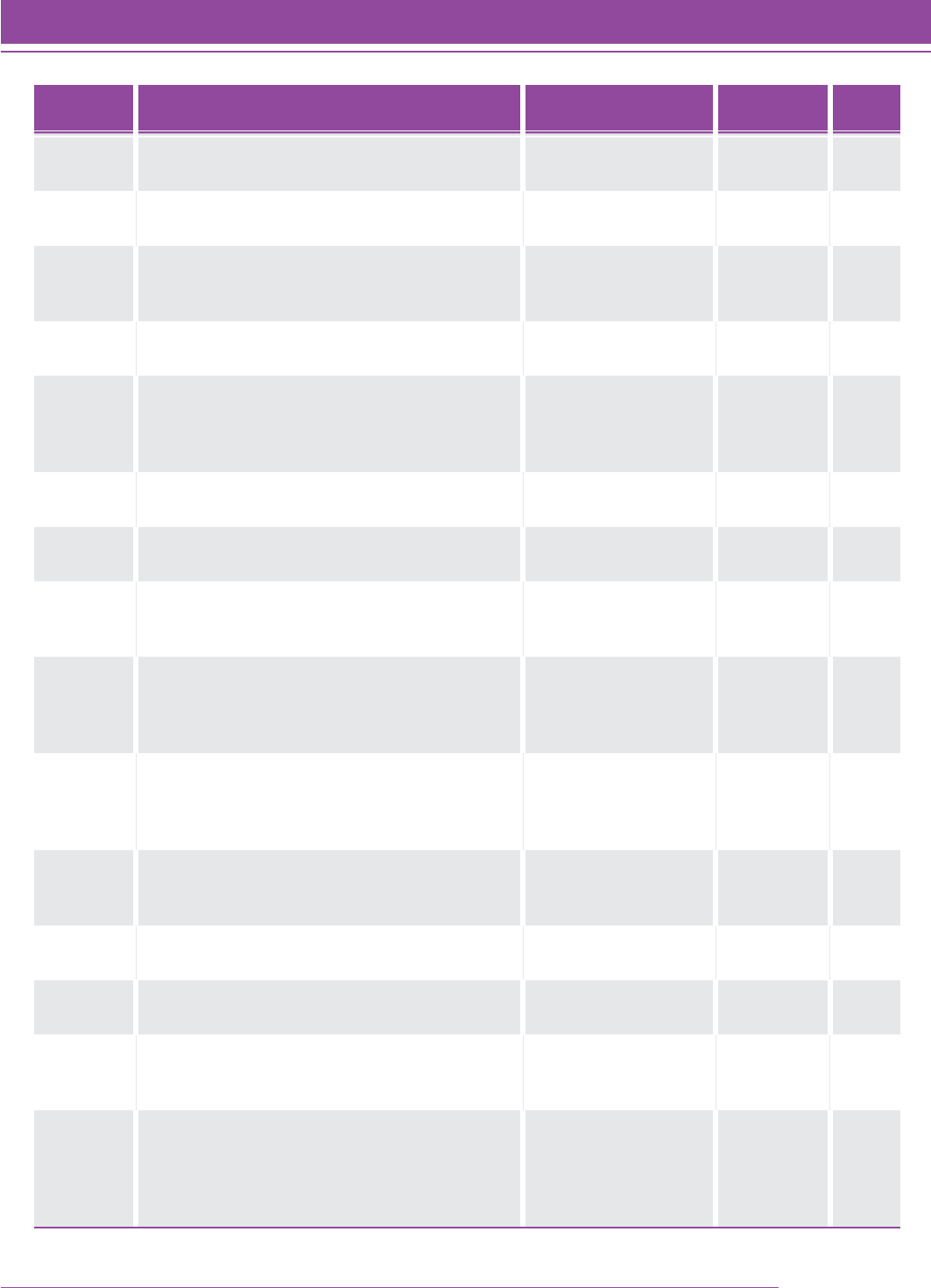

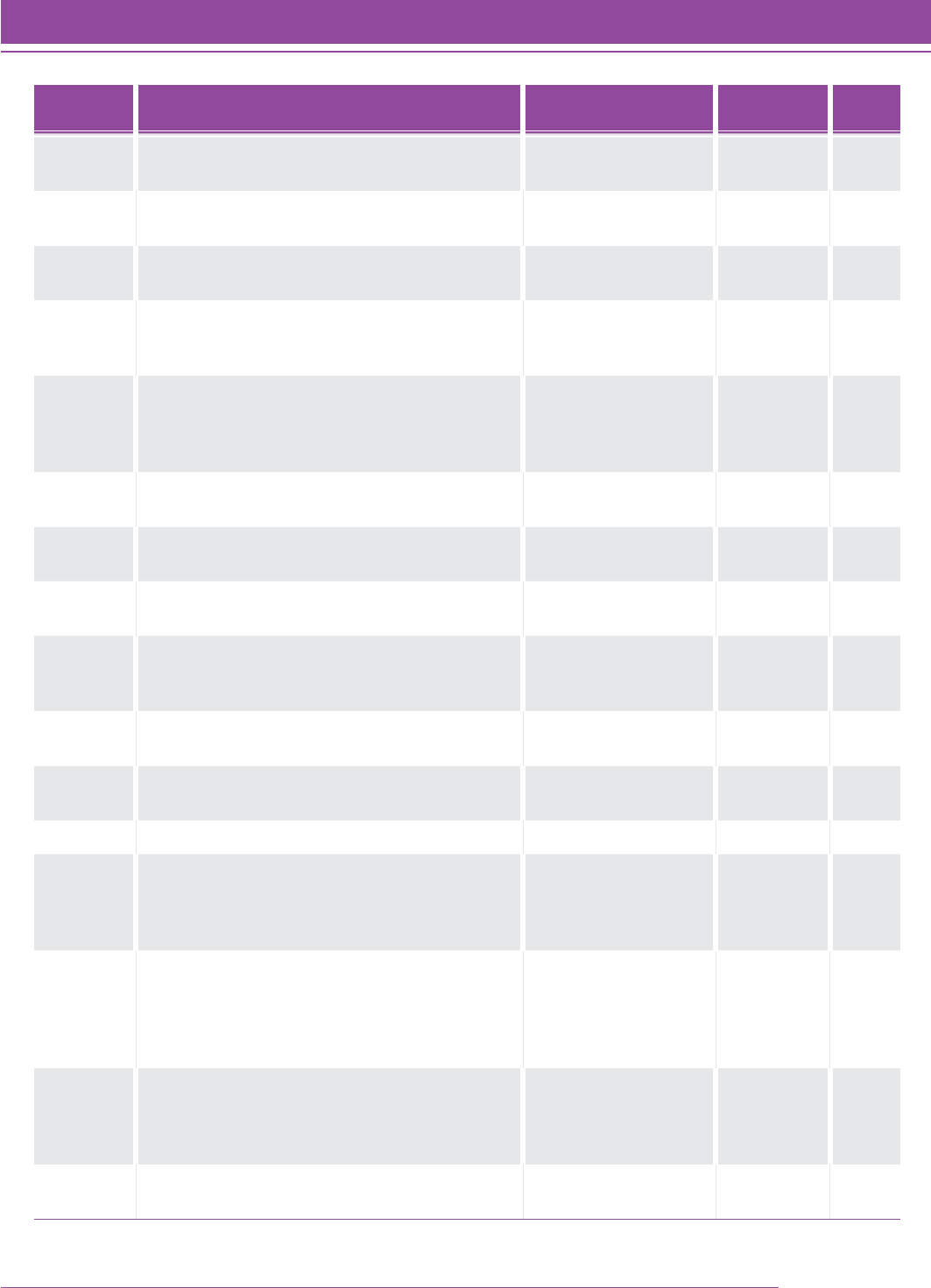

Table 5 shows the mean and median Quantile measures for the final set of students at each grade level. Figure 3

shows the results from the final set of students. The correlation between grade and Quantile measure is 0.78 for this

interim field study.


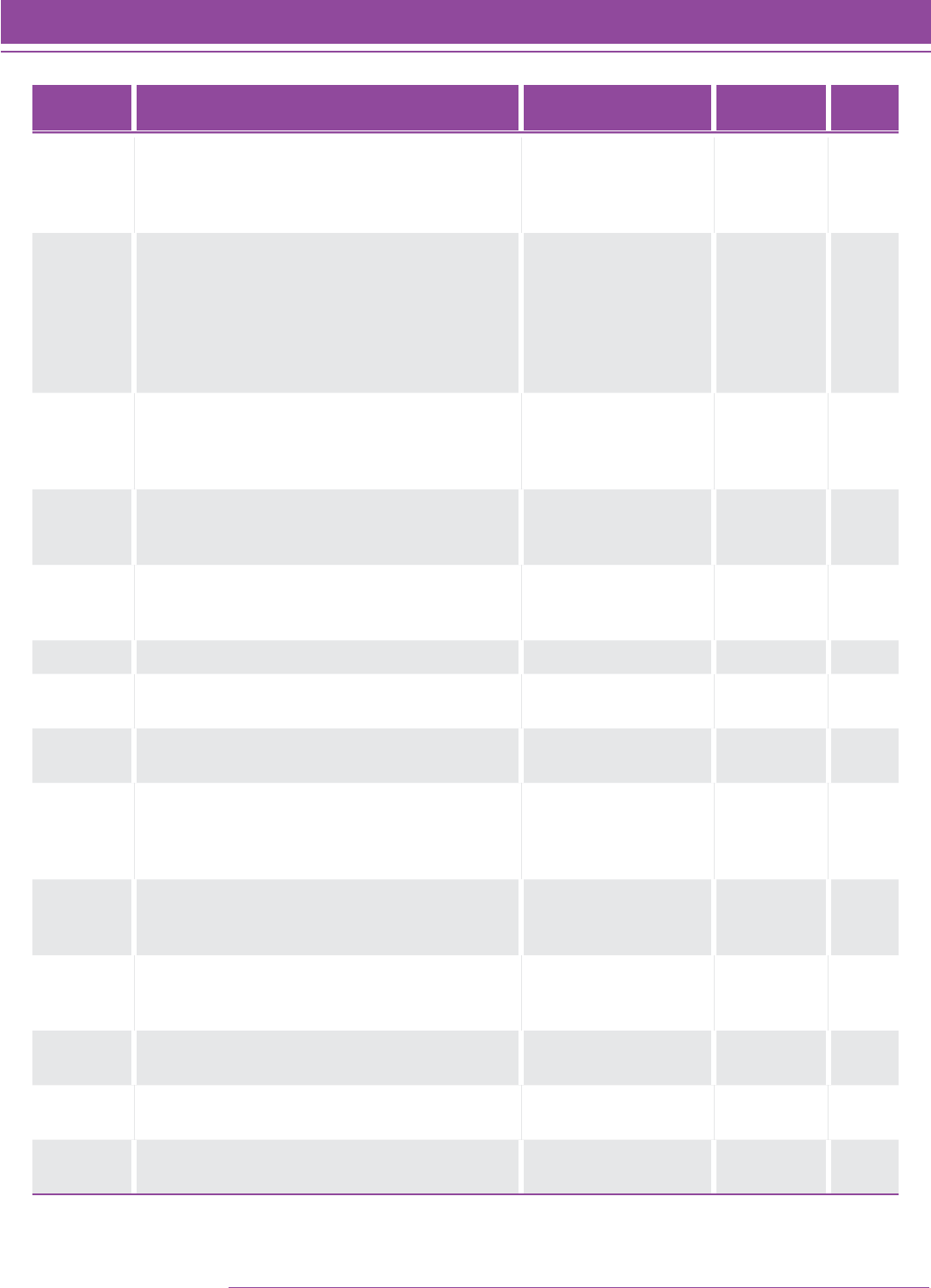

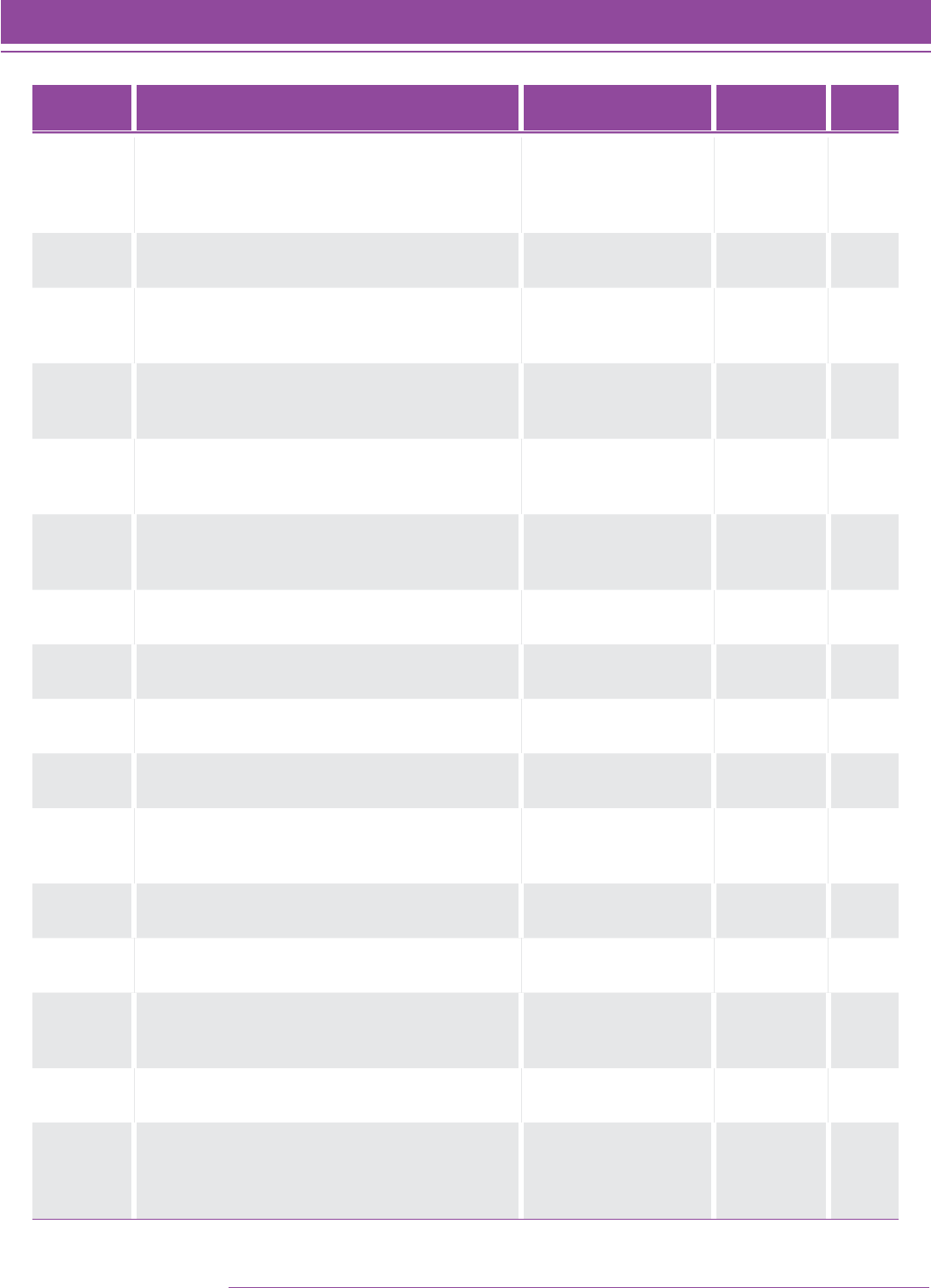

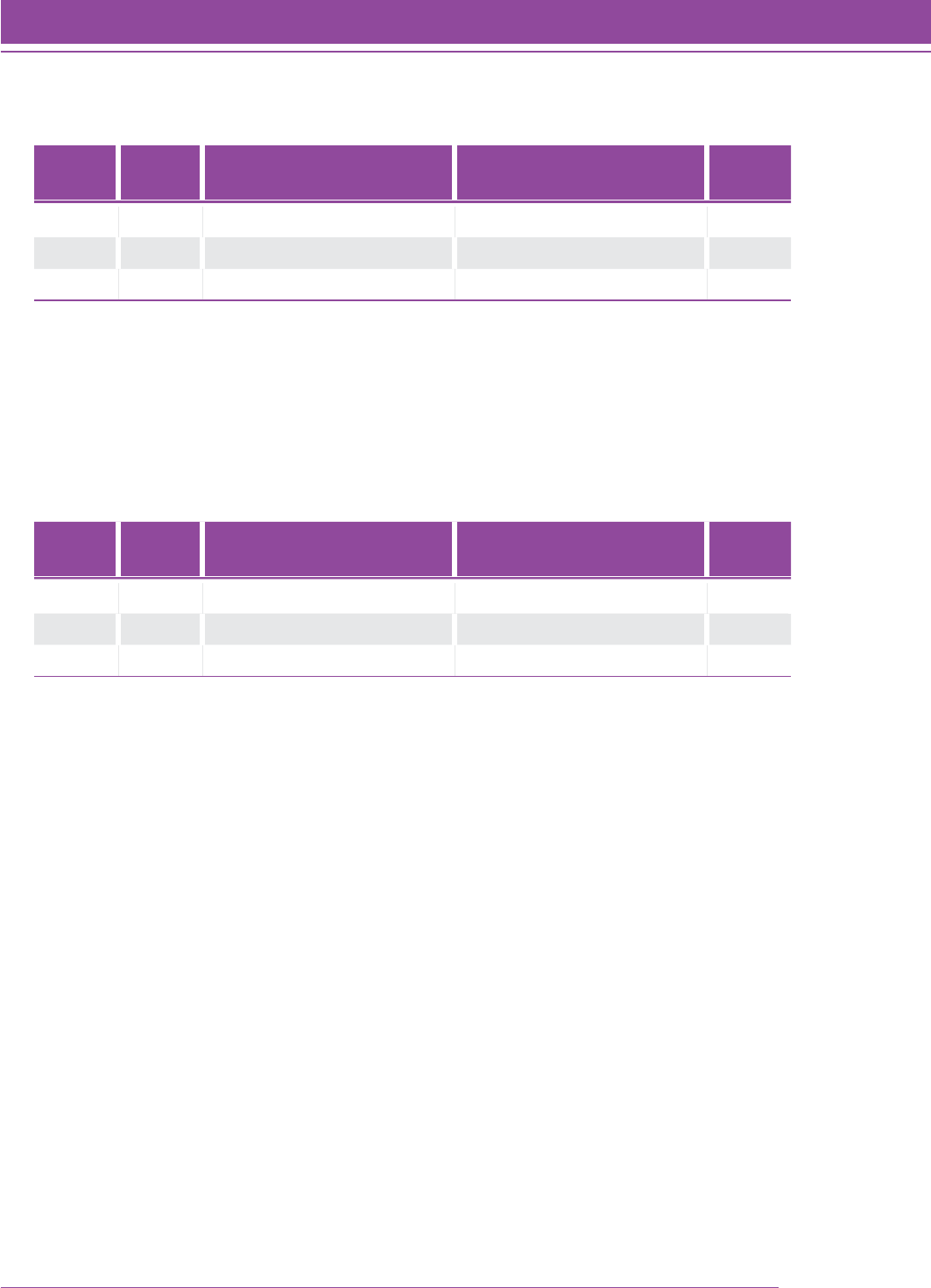

TABLE 5. Mean and median Quantile measure for the final set of N = 9,176 students.

| Grade Level | N | Median Logit Value | Mean (Median) Quantile Measure |
|---|---|---|---|
| 2 | 1,167 | -2.800 | 289.03 (292) |
| 3 | 1,260 | -1.650 | 502.18 (499) |
| 4 | 1,352 | -0.780 | 652.60 (656) |
| 5 | 1,289 | 0.000 | 795.25 (796) |
| 6 | 937 | 0.430 | 880.77 (874) |
| 7 | 1,181 | 0.370 | 877.75 (863) |
| 8 | 955 | 0.810 | 951.41 (942) |
| 9 | 466 | 1.020 | 982.62 (980) |
| 10 | 244 | 1.400 | 1044.08 (1048) |
| 11 | 191 | 2.070 | 1160.49 (1169) |
| 12 | 134 | 2.295 | 1219.87 (1210) |
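The median logit values and median Quantile measures in Table 5 can be used to check the stated multiplier of 180. In the sketch below the multiplier comes from the text, but the offset of 796 is only an inference from the Grade 5 row (median logit 0.000, median 796Q). It is not a constant stated in this guide and should be read as an assumption.

```python
SCALE_MULTIPLIER = 180  # Quantile units per logit, as stated in the text
INFERRED_OFFSET = 796   # assumption: read off the Grade 5 row of Table 5

def logit_to_quantile(logit):
    """Linear conversion from a logit value to the Quantile scale."""
    return SCALE_MULTIPLIER * logit + INFERRED_OFFSET

# Grade: (median logit value, median Quantile measure) from Table 5.
table_5_medians = {
    2: (-2.800, 292), 3: (-1.650, 499), 4: (-0.780, 656), 5: (0.000, 796),
    6: (0.430, 874), 7: (0.370, 863), 8: (0.810, 942), 9: (1.020, 980),
    10: (1.400, 1048), 11: (2.070, 1169), 12: (2.295, 1210),
}

# Largest gap between the converted logit and the reported median.
max_gap = max(abs(logit_to_quantile(logit) - quantile)
              for logit, quantile in table_5_medians.values())
print(round(max_gap, 2))
```

Under these assumptions every row agrees to within one Quantile unit, which is consistent with rounding of the reported logit values.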

FIGURE 3. Box and whisker plot of the Rasch ability estimates (using the Quantile scale) for the final sample of students with outfit statistics less than 1.8 (N = 9,176).

[Box and whisker plot. x-axis: Grade Distribution (Grades 2 through 12); y-axis: Quantile Measure (-500 to 2000).]


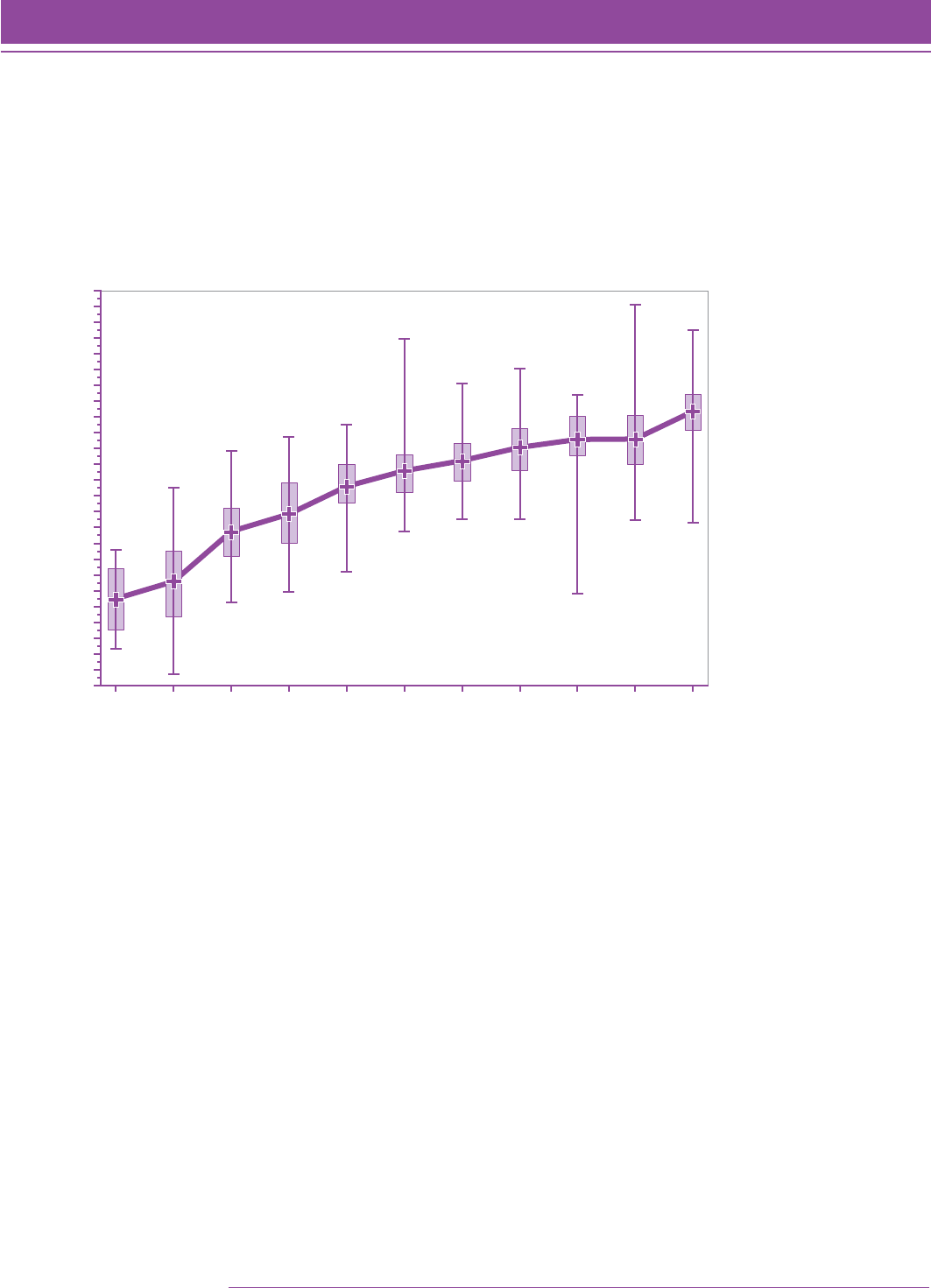

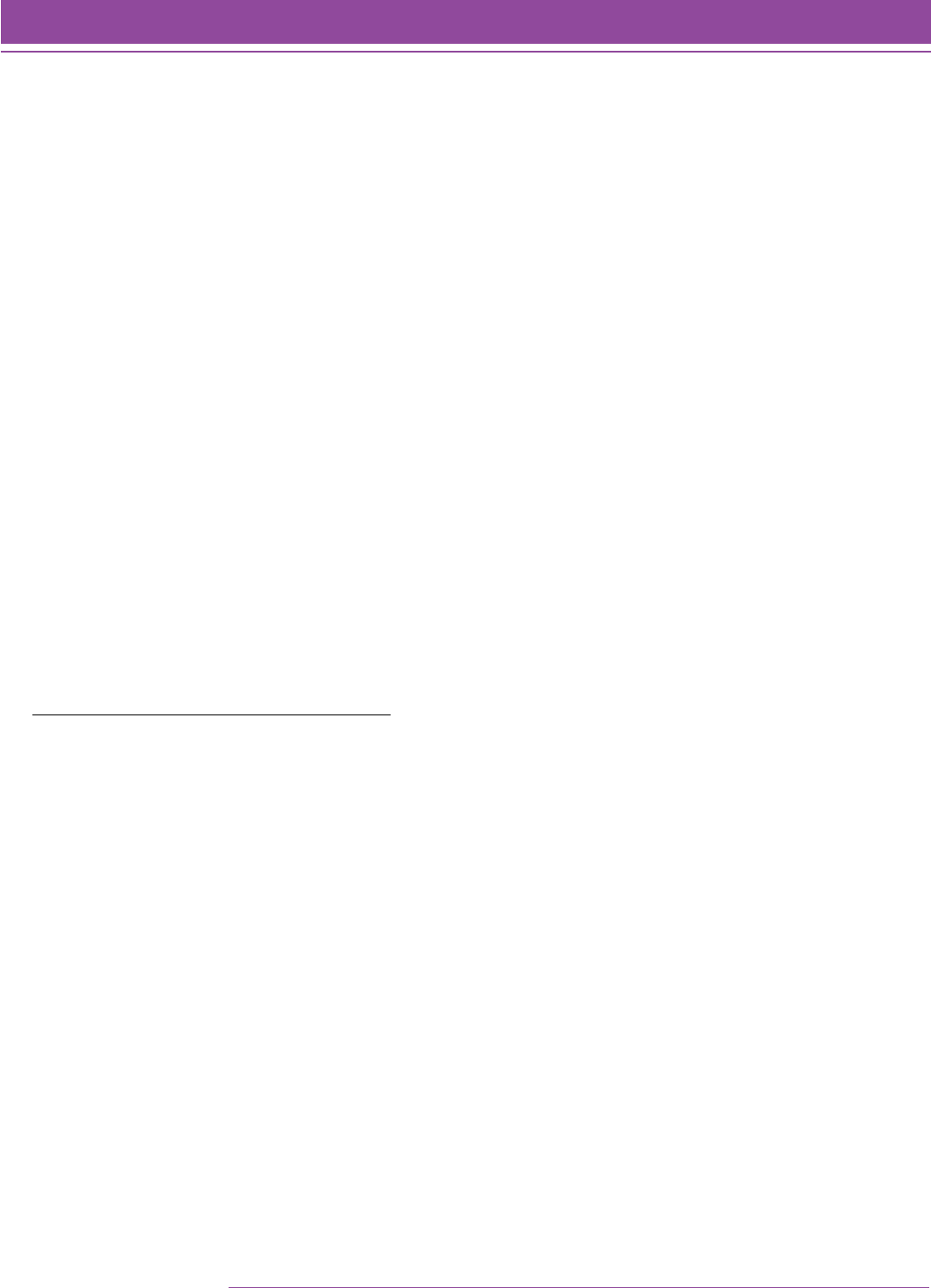

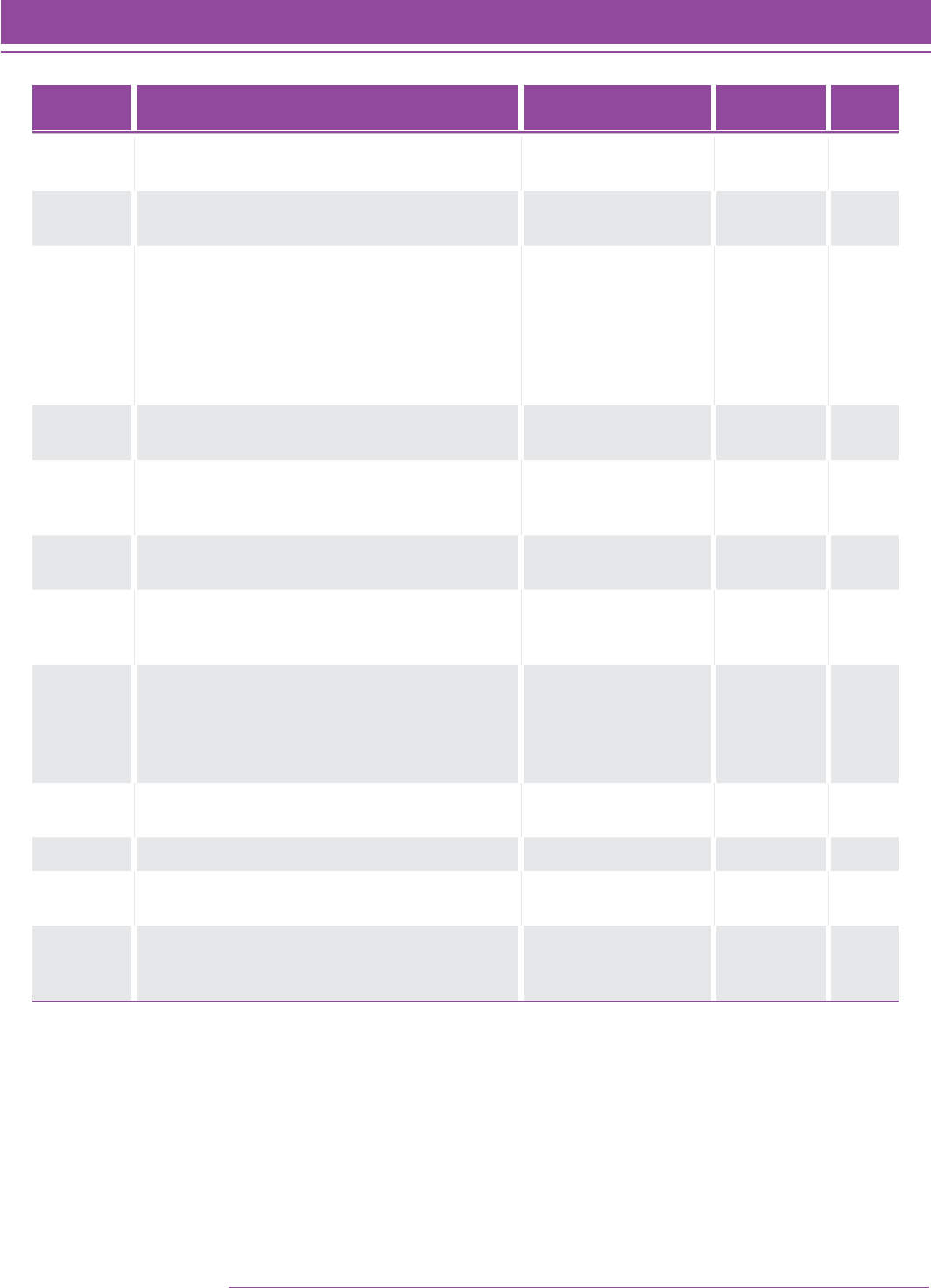

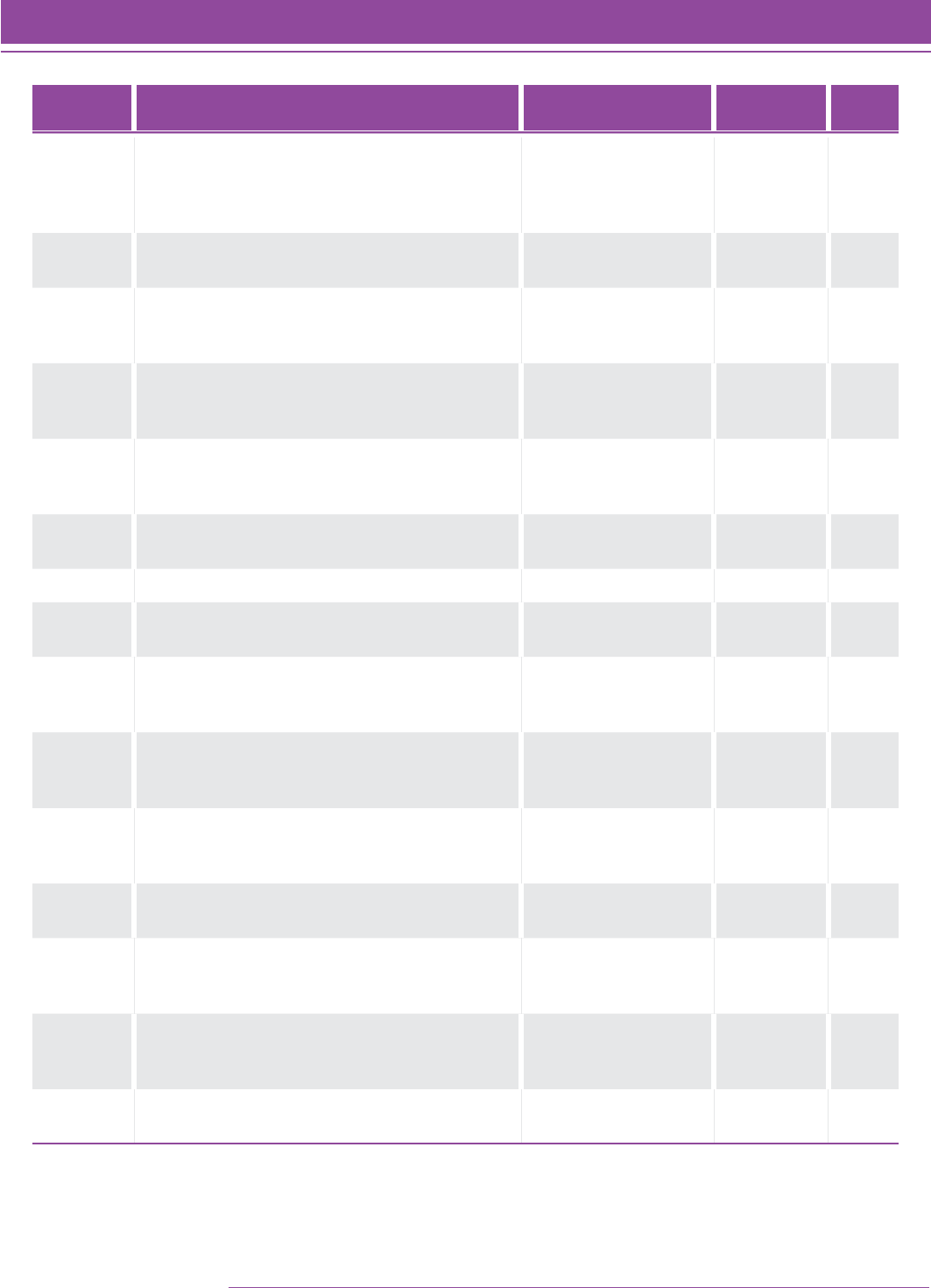

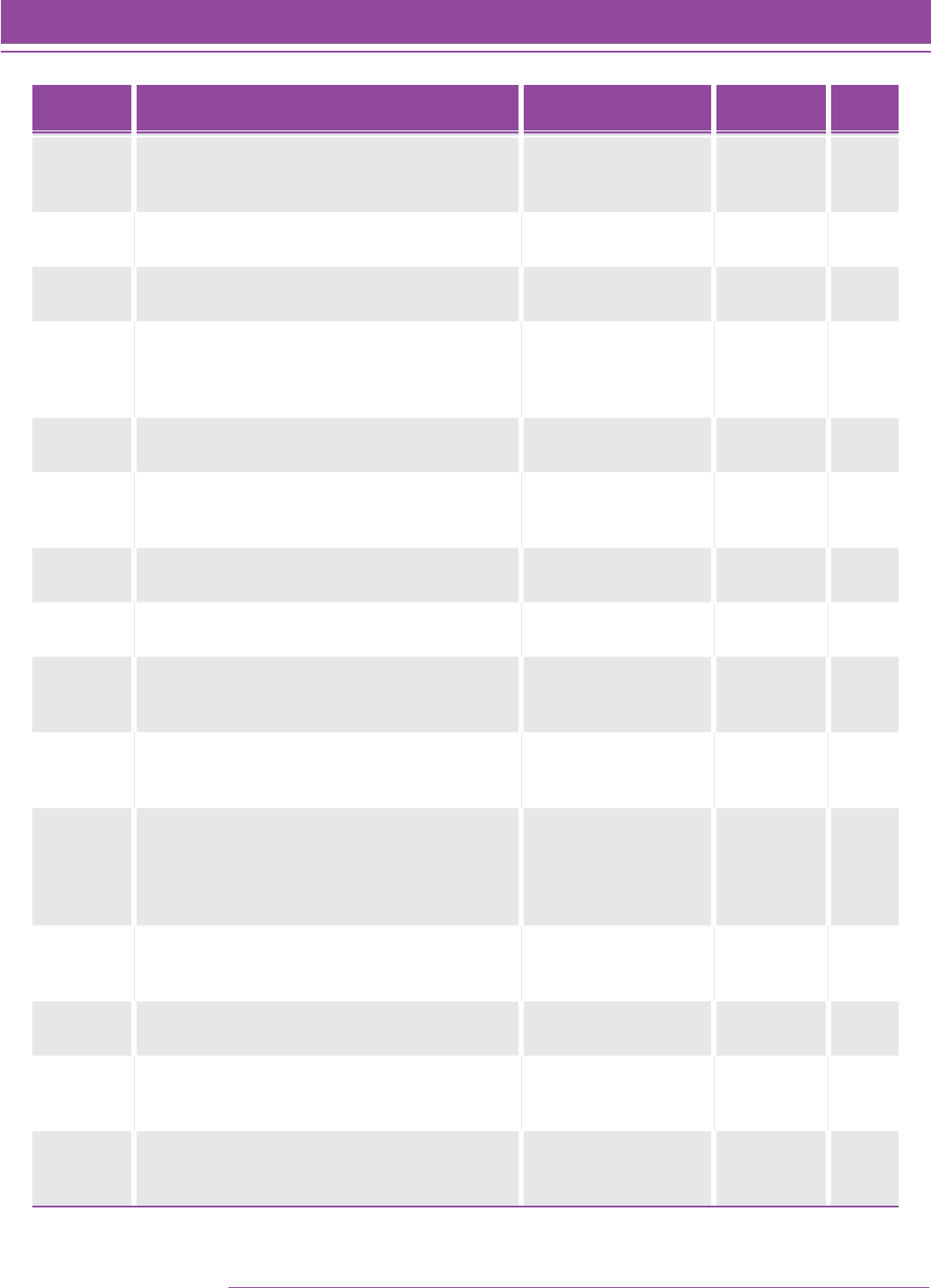

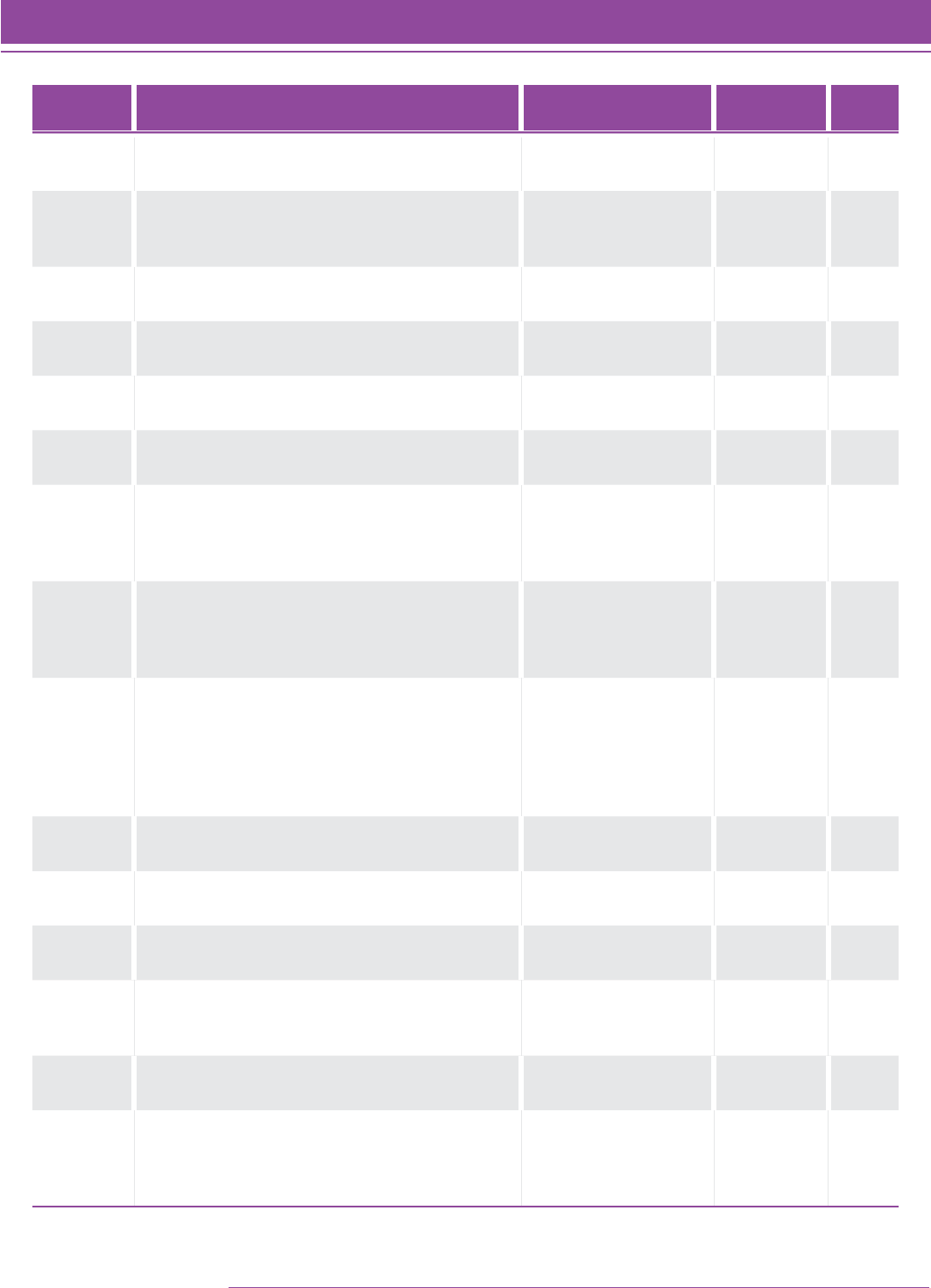

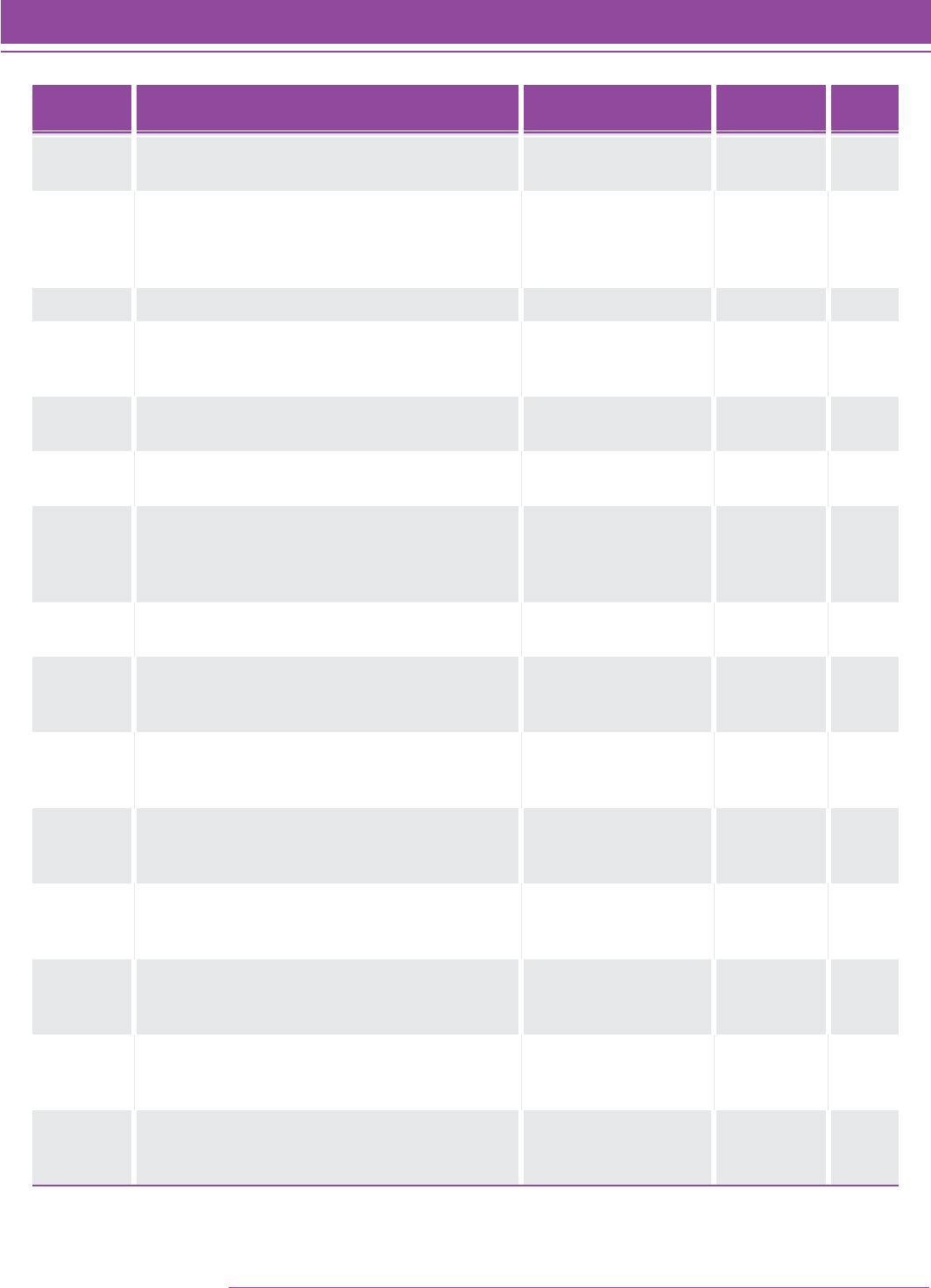

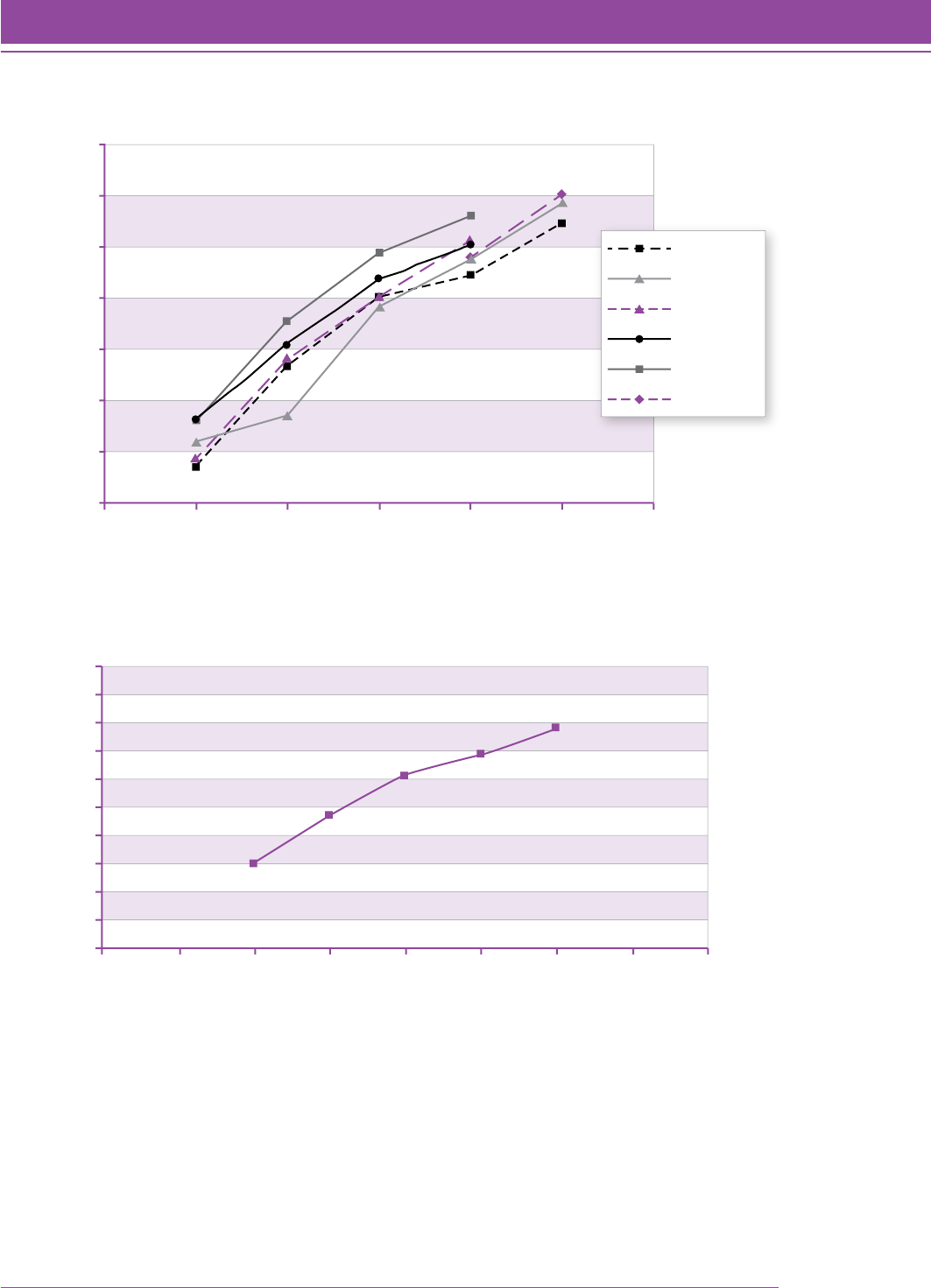

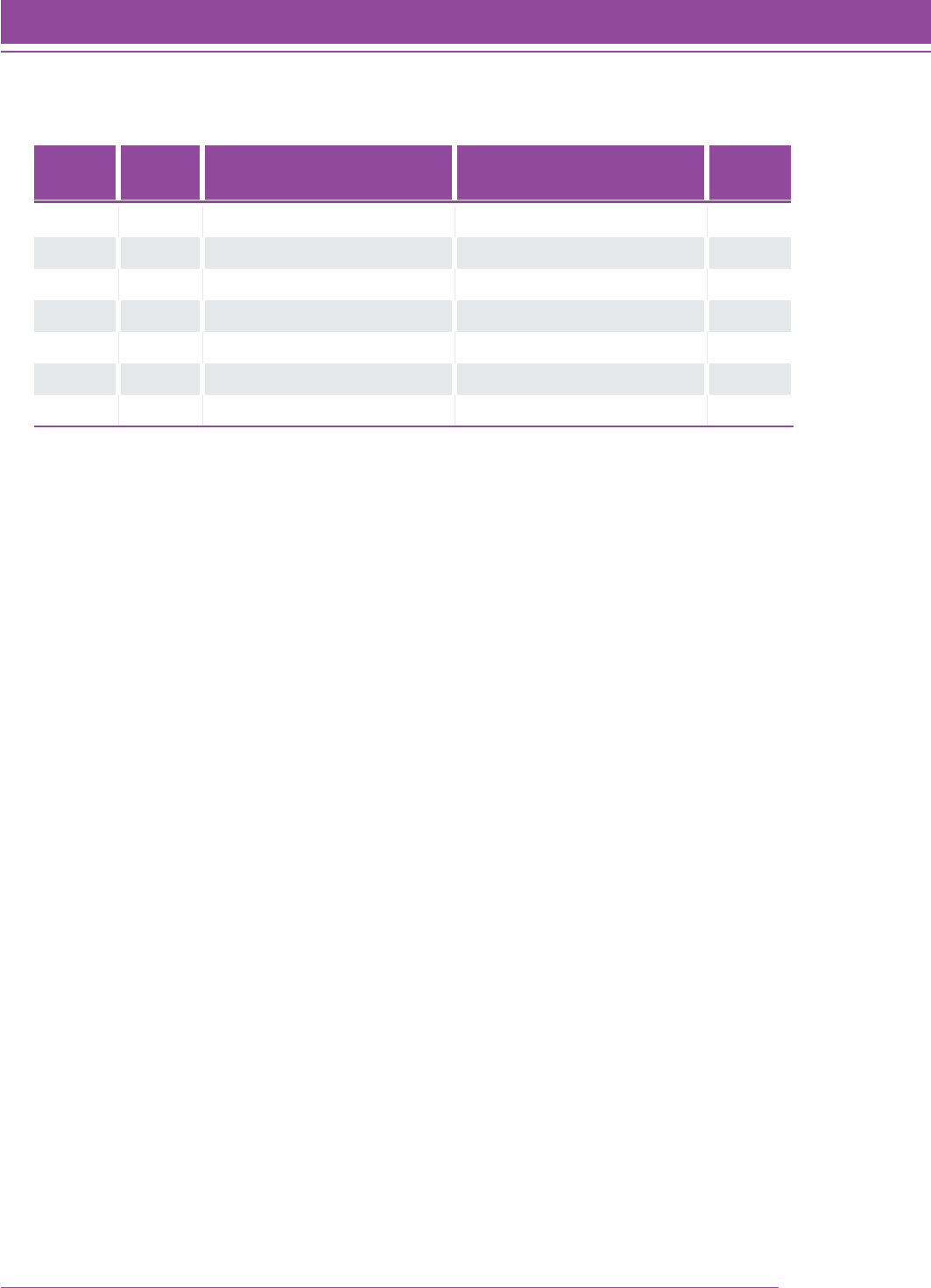

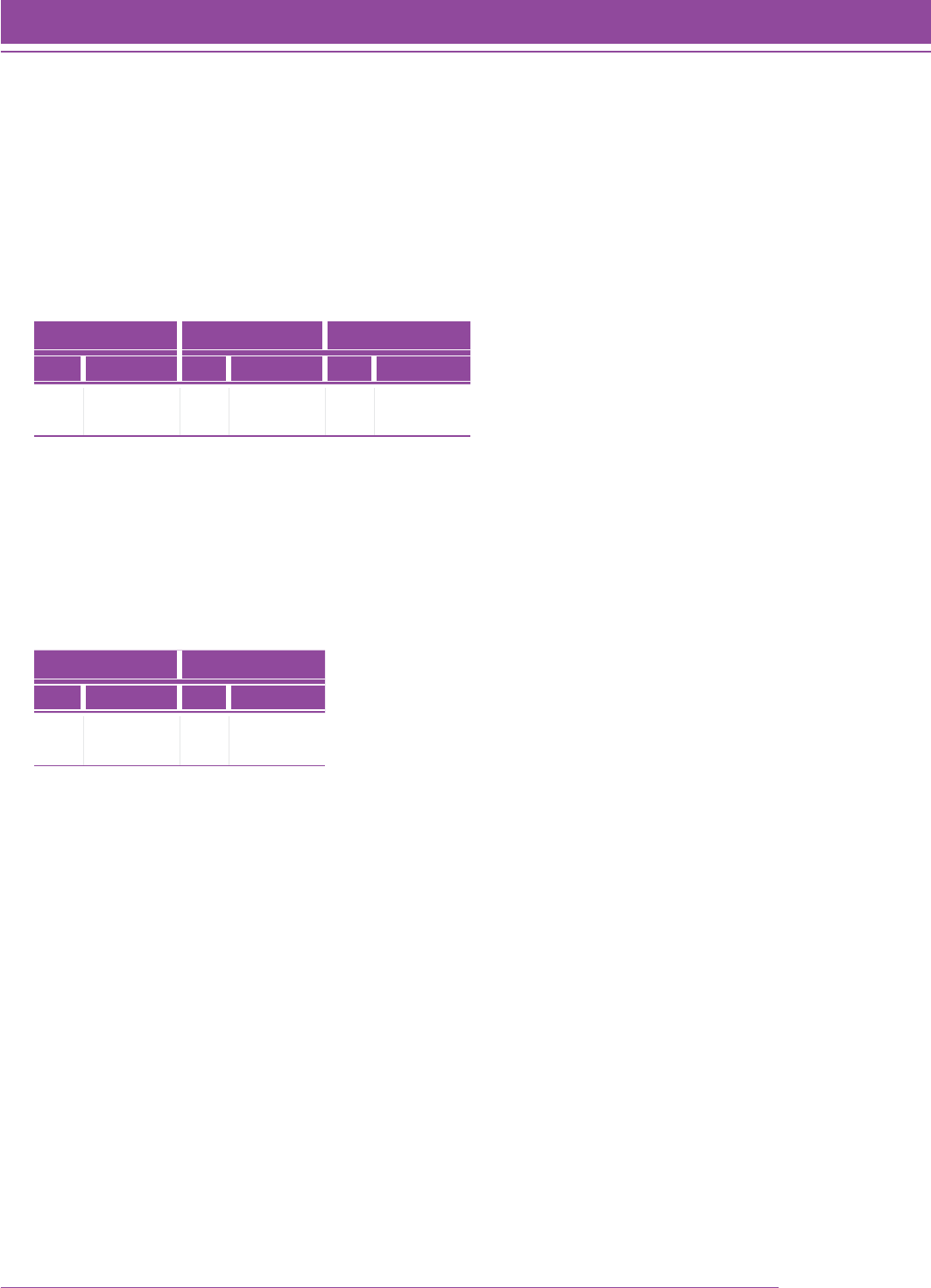

Figure 4 shows the distribution of item difficulties based on the final sample of students. For this analysis, missing

data were treated as skipped items and not counted as wrong. There is a gradual increase in difficulty when items

are sorted by the test level for which they were written. This distribution appears to be nonlinear, which is consistent

with other studies. The correlation between grade level for which the item was written and the Quantile measure of

the item was 0.80.

FIGURE 4. Box and whisker plot of the Rasch ability estimates (using the Quantile scale) of the 685 Quantile Framework items for the final sample of students (N = 9,176).

[Box and whisker plot. x-axis: Grade Level of Item (from item number), 1 through 11; y-axis: Quantile Measure (-500 to 2000).]

The field testing of the items written for the Quantile Framework indicates a strong correlation between the grade

level of the item and the item difficulty.


The Quantile Scale

For development of the Quantile scale, two features needed to be defined:

(1) the scale multiplier (conversion factor from the Rasch model)

(2) the anchor point

Once the scale is defined, it can be used to assign Quantile measures to individual Quantile Skills and Concepts, or

QSCs, as well as clusters of QSCs.

Generating the Quantile Scale

As described in the previous section, the Rasch item response theory model (Wright & Stone, 1979) was used to

estimate the difficulties of items and the abilities of persons on the logit scale. The calibrations of the items from the

Rasch model are objective in the sense that the relative difficulties of the items remain the same across different

samples (specific objectivity). When two items are administered to the same individual, it can be determined which

item is harder and which one is easier. This ordering should hold when the same two items are administered to a

second person.
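The sample independence of item ordering follows directly from Equation 2. As a brief worked derivation (writing person achievement as $b_n$ and item difficulty as $d_i$, as in Equation 2), taking the log-odds of a correct response gives

$$\ln\frac{P_{ni}}{1 - P_{ni}} = b_n - d_i$$

so for two items $i$ and $j$ administered to the same person $n$,

$$\ln\frac{P_{ni}}{1 - P_{ni}} - \ln\frac{P_{nj}}{1 - P_{nj}} = (b_n - d_i) - (b_n - d_j) = d_j - d_i$$

The person term cancels, so the comparison between two items depends only on their difficulties, whichever person responds. The same algebra shows why the location of zero is undetermined: adding a constant to every $b_n$ and every $d_i$ leaves each difference $b_n - d_i$, and therefore every probability, unchanged.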

The problem is that the location of the scale is not known. General objectivity requires that scores obtained from

different test administrations be tied to a common zero; absolute location must be sample independent (Stenner,

1990). To achieve general objectivity instead of simply specific objectivity, the theoretical logit difficulties must be

transformed to a scale where the ambiguity regarding the location of zero is resolved.

The first step in developing the Quantile scale was to determine the conversion factor needed to transform logits

from the Rasch model into Quantile scale units. A vast amount of research has already been conducted on the

relationship between a student’s achievement in reading and the Lexile® scale. Therefore, the decision was made to

examine the relationship between reading and mathematics scales used with other assessments.

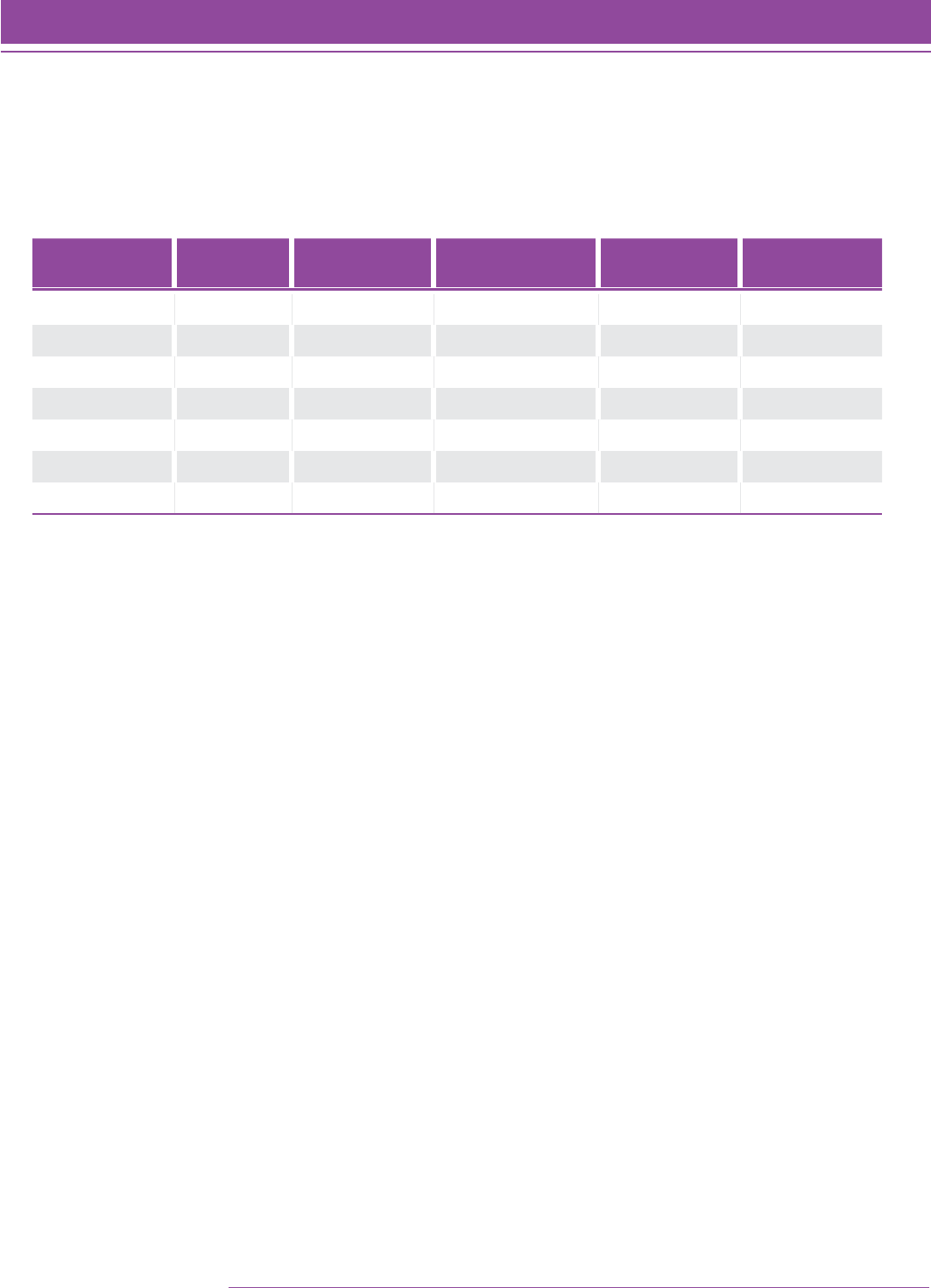

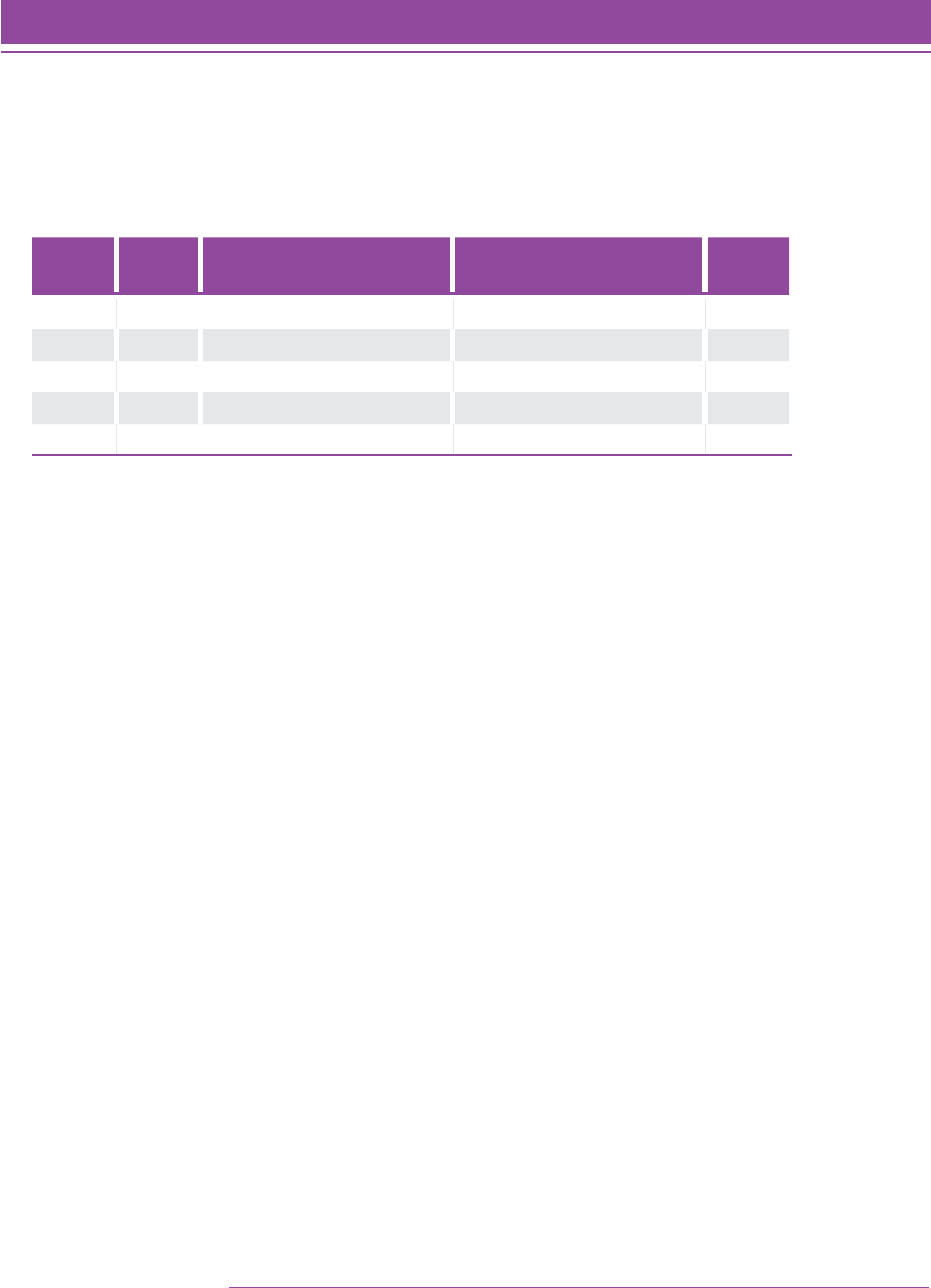

The median scale score for each grade level on a norm-referenced assessment linked with the Lexile scale is

plotted in Figure 5 using the same conversion equation for both reading and mathematics. Based on Figure 5, it was

concluded that the same conversion factor used with the Lexile scale could be used with the Quantile scale.


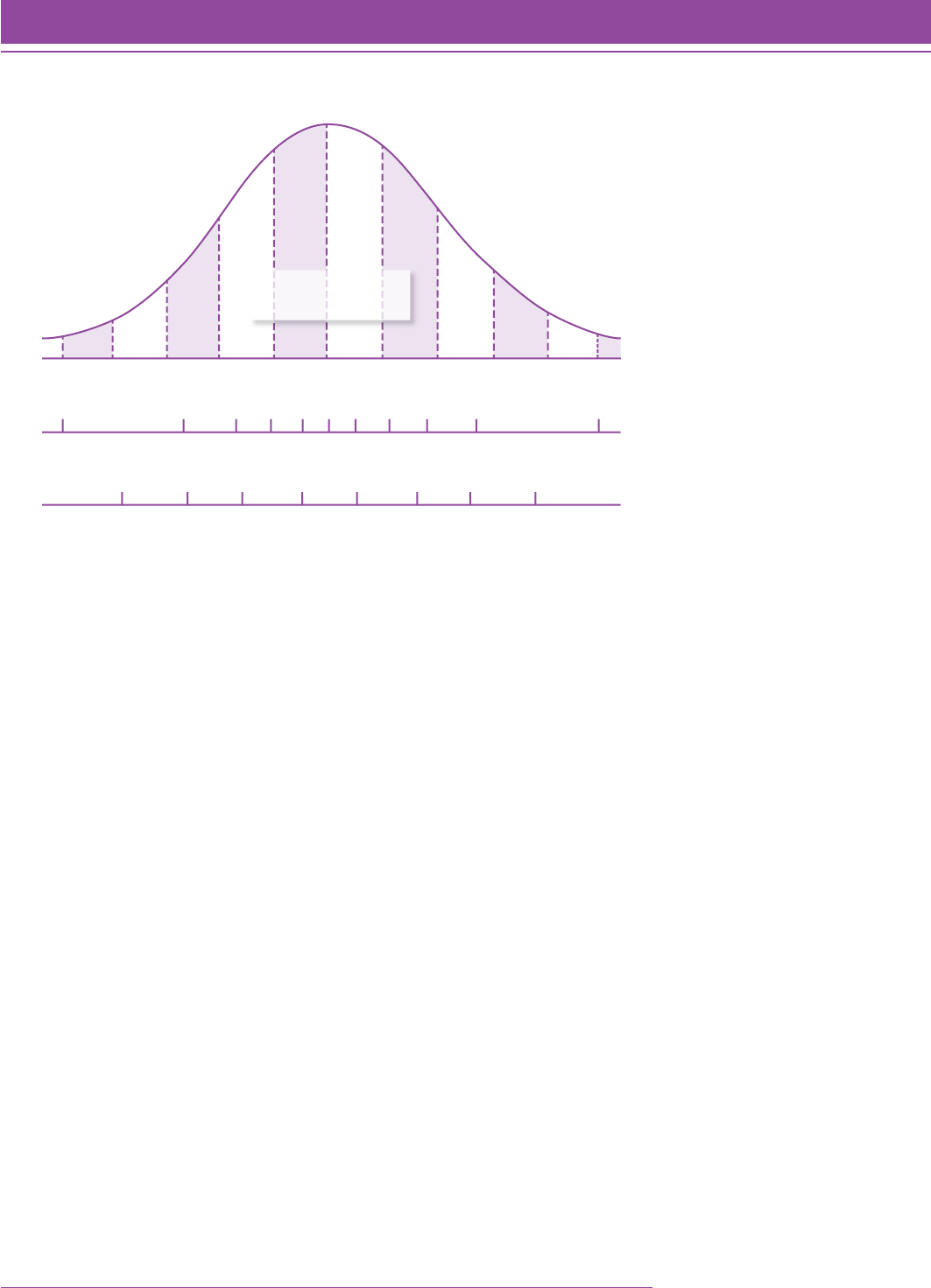

FIGURE 5. Relationship between reading and mathematics scale scores on a norm-

referenced assessment linked to the Lexile scale in reading.

SMI_TG_032

2400

2200

0

200

400

600

800

1000

1200

10 2 3 4 5 6 7 8 9 10 11 12 13

Grade Level

Lexile Scale Calibration

Reading Math

The second step in developing a Quantile scale with a fixed zero was to identify an anchor point for the scale. Given