Architecting Microsoft SQL Server on VMware vSphere Best Practices Guide

Architecting Microsoft SQL Server on VMware vSphere®

Best Practices Guide

March 2017

Table of Contents

1. Introduction ........................................................................................................................................... 5

1.1 Purpose ........................................................................................................................................ 5

1.2 Target Audience .......................................................................................................................... 6

2. Application Requirements Considerations ........................................................................................... 7

2.1 Understand the Application Workload ......................................................................................... 7

2.2 Availability and Recovery Options ............................................................................................... 8

2.2.1 VMware Business Continuity Options ..................................................................................... 8

2.2.2 Native SQL Server Capabilities ............................................................................................. 11

3. Best Practices for Deploying SQL Server Using vSphere .................................................................. 12

3.1 Rightsizing ................................................................................................................................. 12

3.2 Host Configuration ..................................................................................................................... 13

3.2.1 BIOS/UEFI and Firmware Versions....................................................................................... 13

3.2.2 BIOS/UEFI Settings ............................................................................................................... 13

3.2.3 Power Management .............................................................................................................. 13

3.3 CPU Configuration ..................................................................................................................... 16

3.3.1 Physical, Virtual, and Logical CPUs and Cores .................................................................... 16

3.3.2 Allocating vCPU to SQL Server Virtual Machines ................................................................. 17

3.3.3 Hyper-threading ..................................................................................................................... 17

3.3.4 NUMA Consideration ............................................................................................................. 18

3.3.5 Cores per Socket ................................................................................................................... 19

3.3.6 CPU Hot Plug ........................................................................................................................ 21

3.3.7 CPU Affinity ........................................................................................................................... 21

3.3.8 Virtual Machine Encryption .................................................................................................... 21

3.4 Memory Configuration ............................................................................................................... 22

3.4.1 Memory Sizing Considerations .............................................................................................. 23

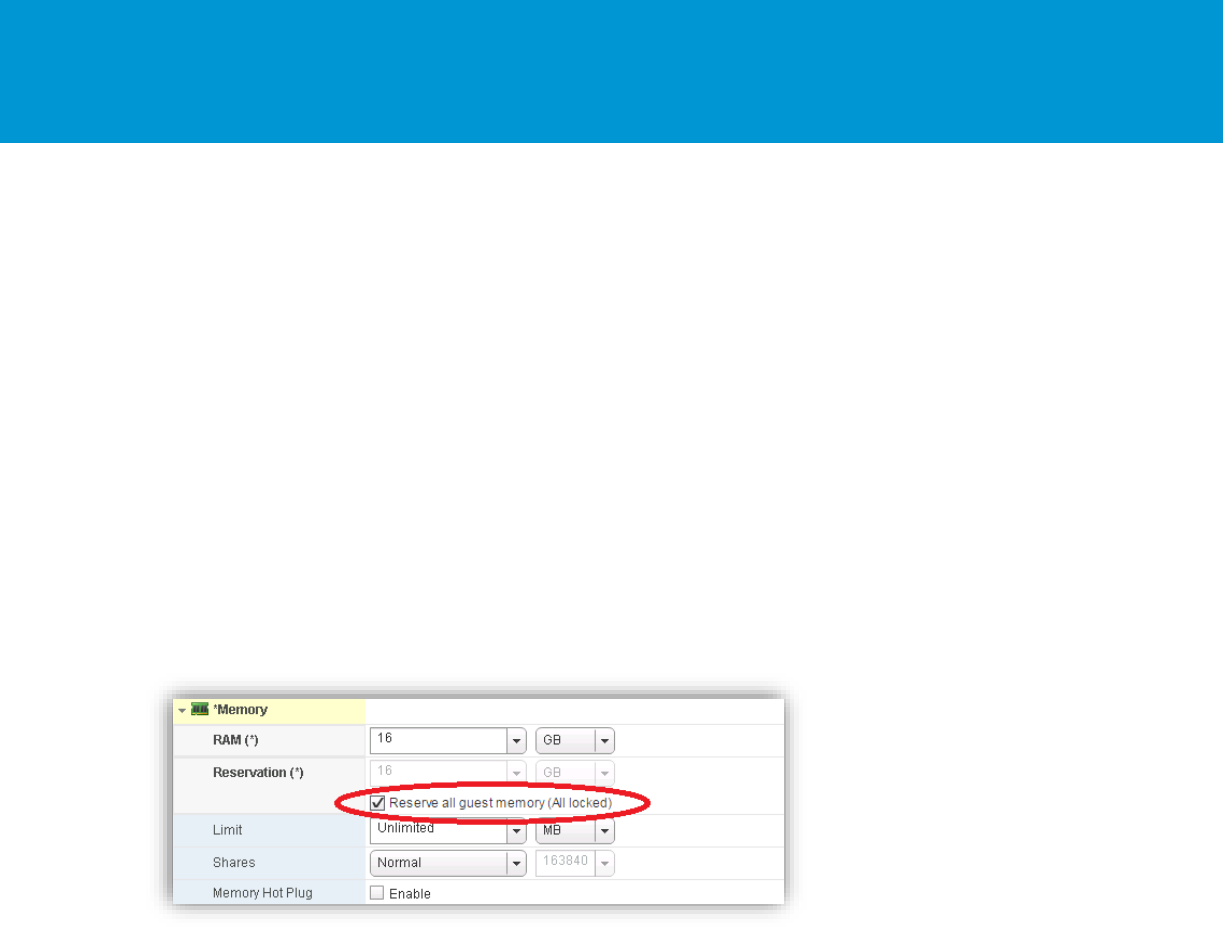

3.4.2 Memory Reservation ............................................................................................................. 24

3.4.3 The Balloon Driver ................................................................................................................. 24

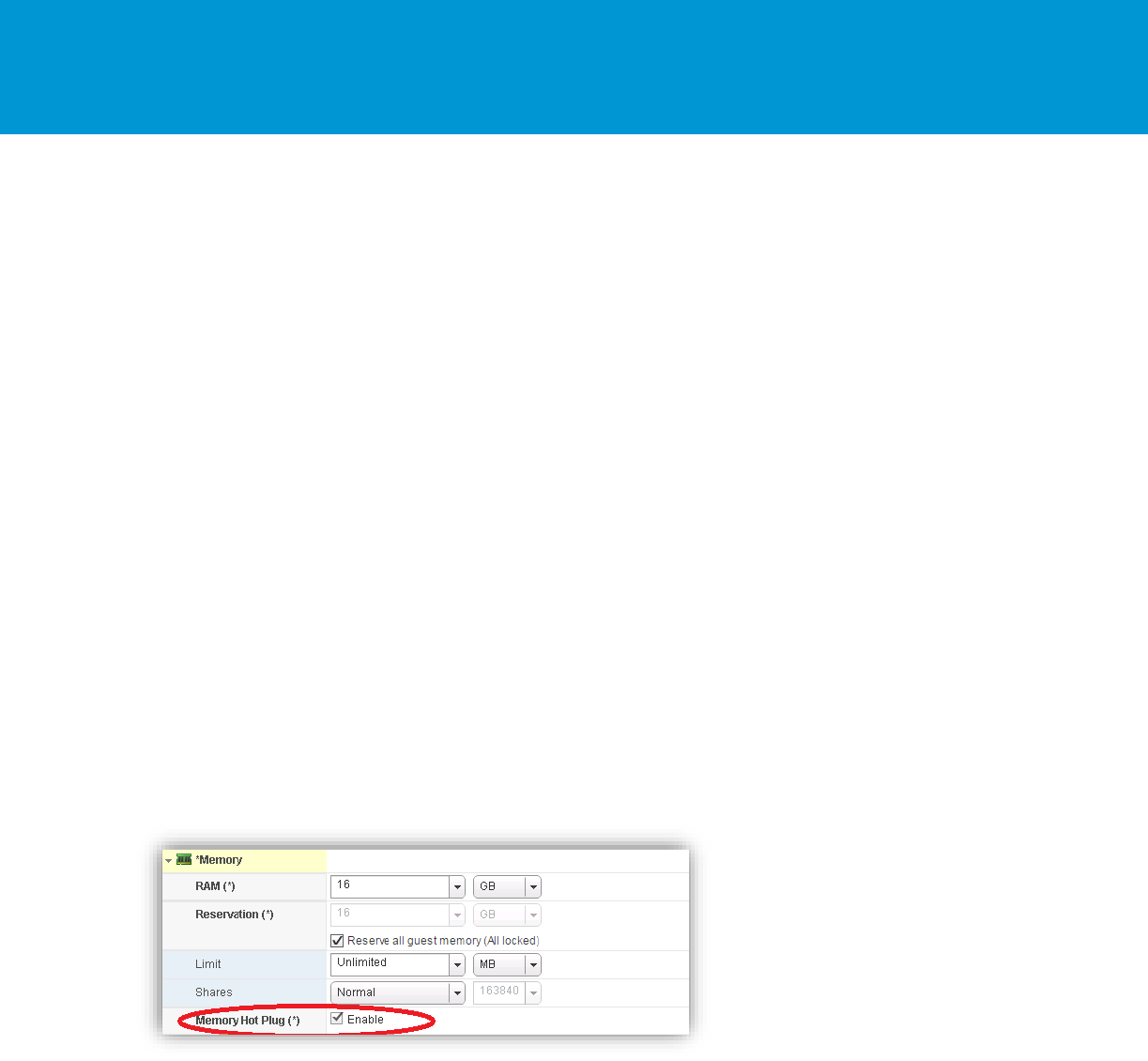

3.4.4 Memory Hot Plug ................................................................................................................... 25

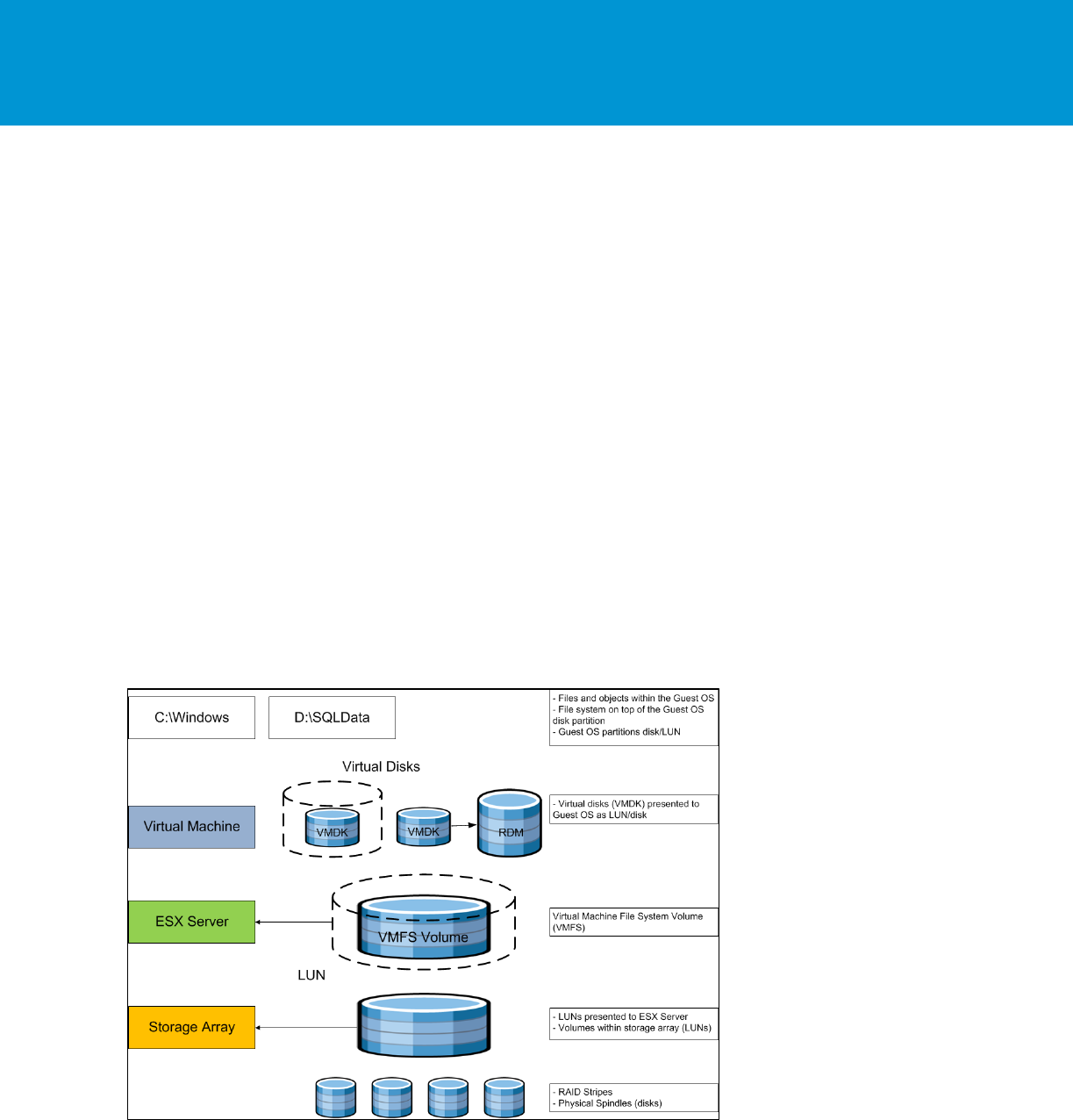

3.5 Storage Configuration ................................................................................................................ 25

3.5.1 vSphere Storage Options ...................................................................................................... 26

3.5.2 Allocating Storage ................................................................................................................. 35

3.5.3 Considerations for Using All-Flash Arrays ............................................................................ 37

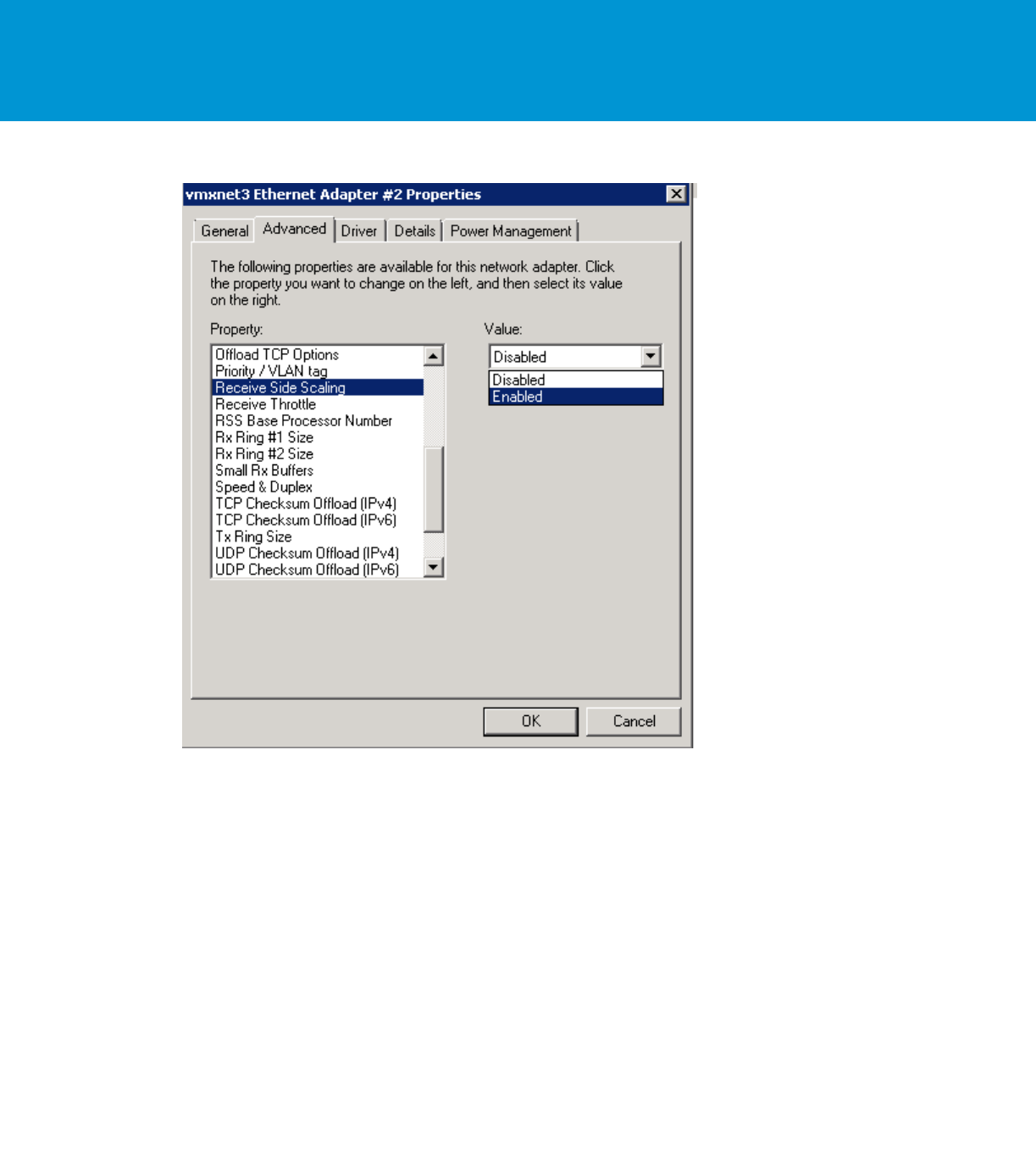

3.6 Network Configuration ............................................................................................................... 39

3.7 Virtual Network Concepts .......................................................................................................... 40

3.7.1 Virtual Networking Best Practices ......................................................................................... 41

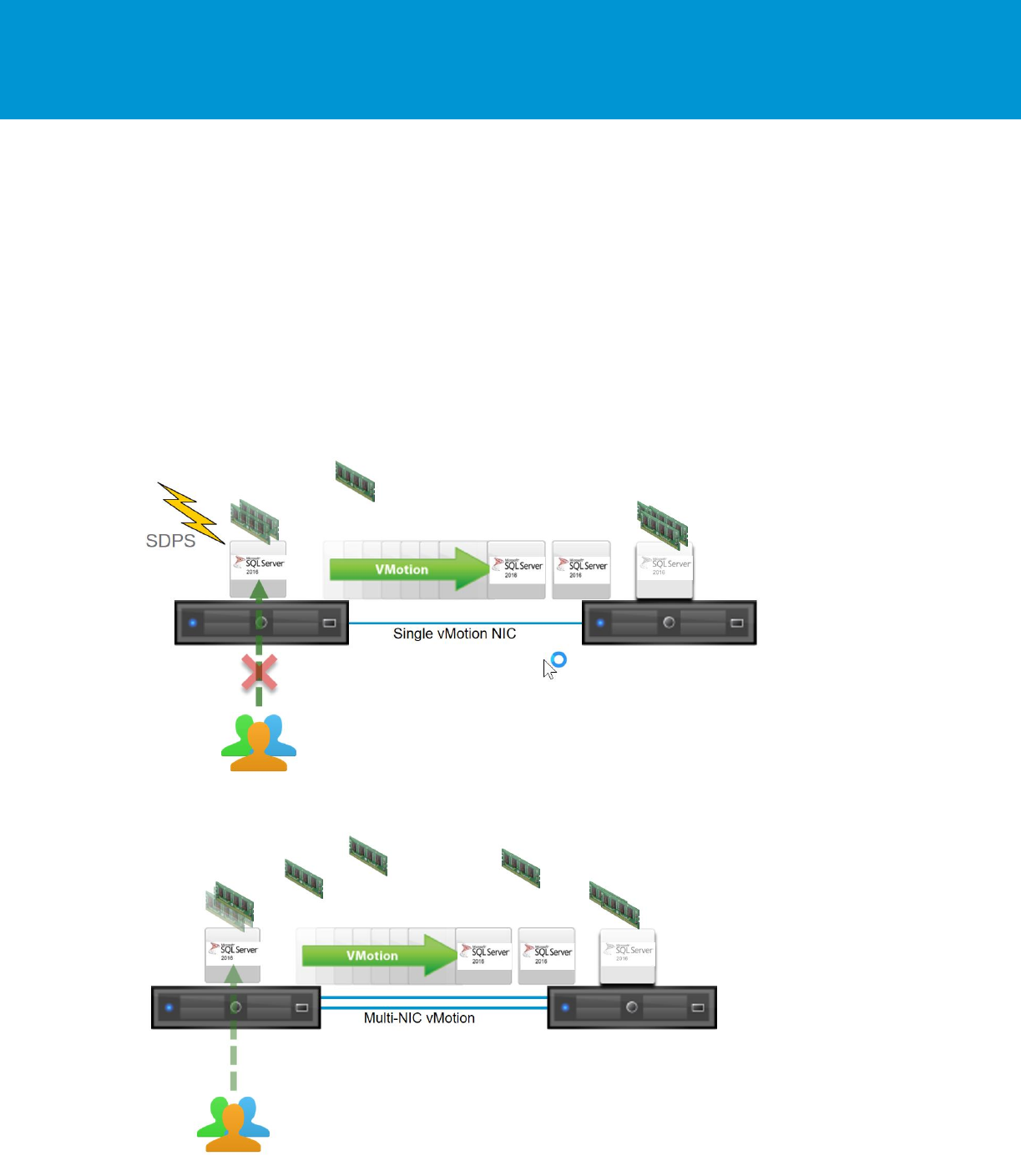

3.7.2 Using multinic vMotion for High Memory Workloads ............................................................. 41

B E S T P R A C T I C E S G U I D E / P AG E 3 OF 53

Architecting Microsoft SQL Server on VMware vSphere

3.6.4. Enable Jumbo Frames for vSphere vMotion Interfaces ........................................................... 43

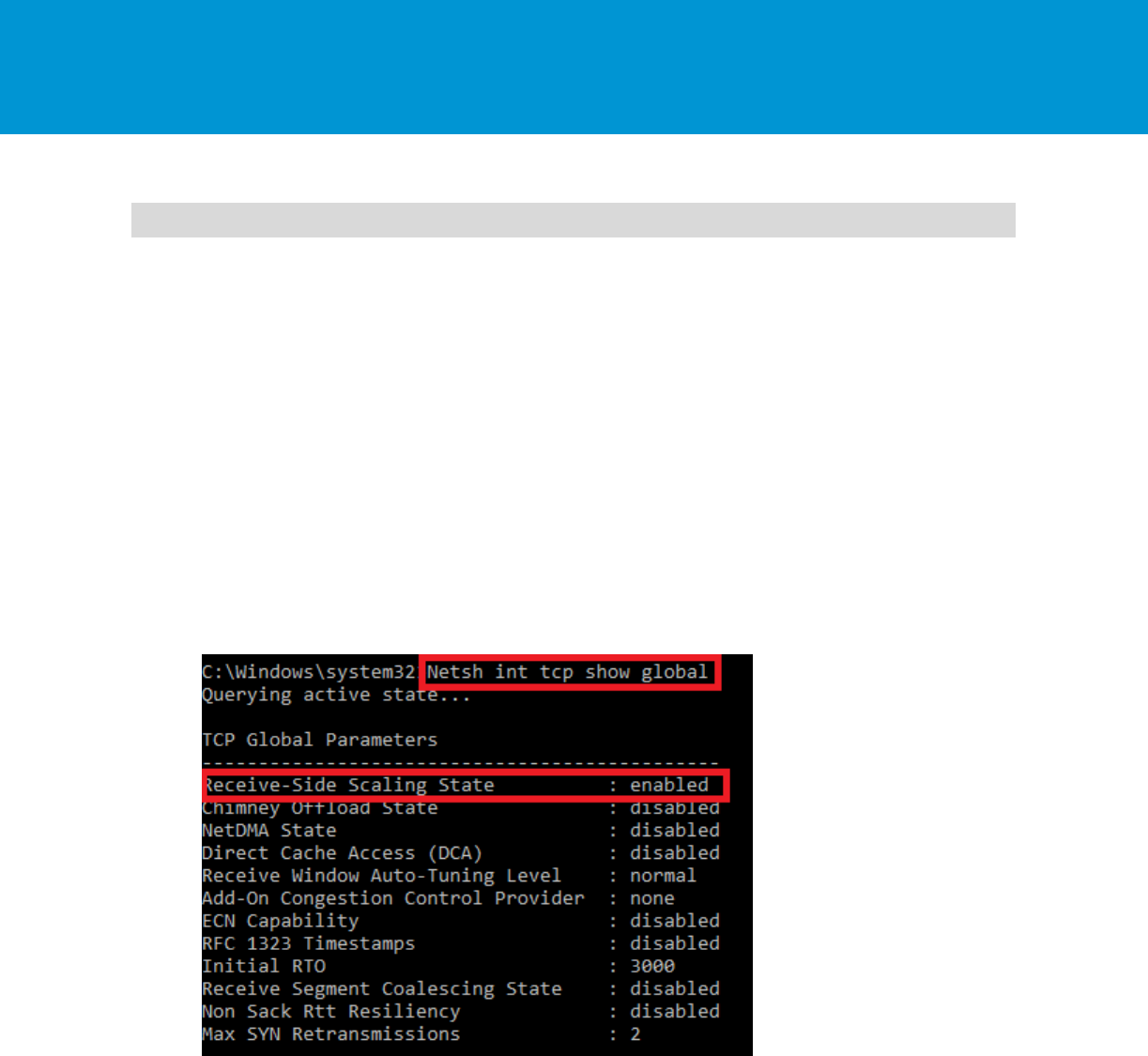

4. SQL Server and In-Guest Best Practices ........................................................................................... 44

4.1 Windows Server Configuration .................................................................................................. 44

4.2 Maximum Server Memory and Minimum Server Memory ......................................................... 46

4.3 Lock Pages in Memory .............................................................................................................. 46

4.4 Large Pages .............................................................................................................................. 47

4.5 CXPACKET, MAXDOP, and CTFP ........................................................................................... 48

4.6 Using Antivirus ........................................................................................................................... 48

5. VMware Enhancements for Deployment and Operations .................................................................. 49

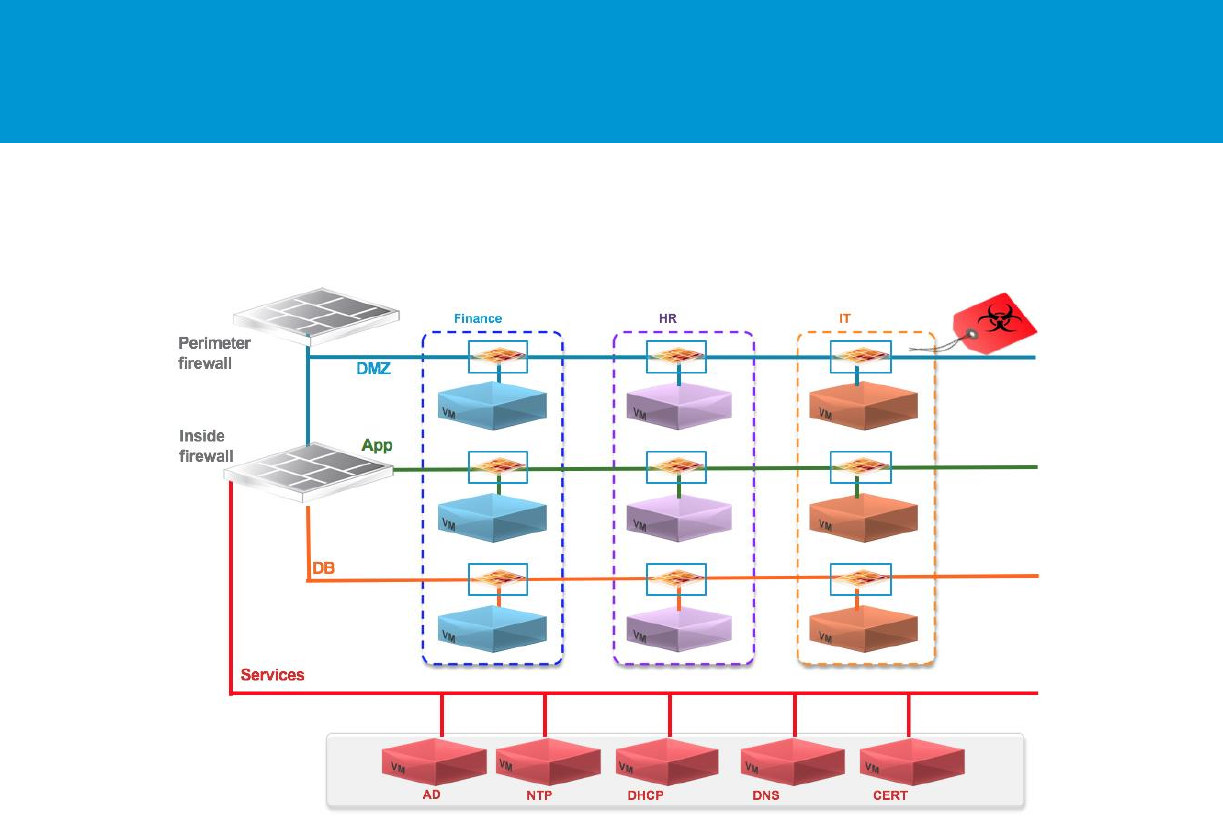

5.1 Network Virtualization with VMware NSX for vSphere .............................................................. 49

5.1.1 NSX Edge Load balancing .................................................................................................... 49

5.1.2 VMware NSX Distributed Firewall ......................................................................................... 50

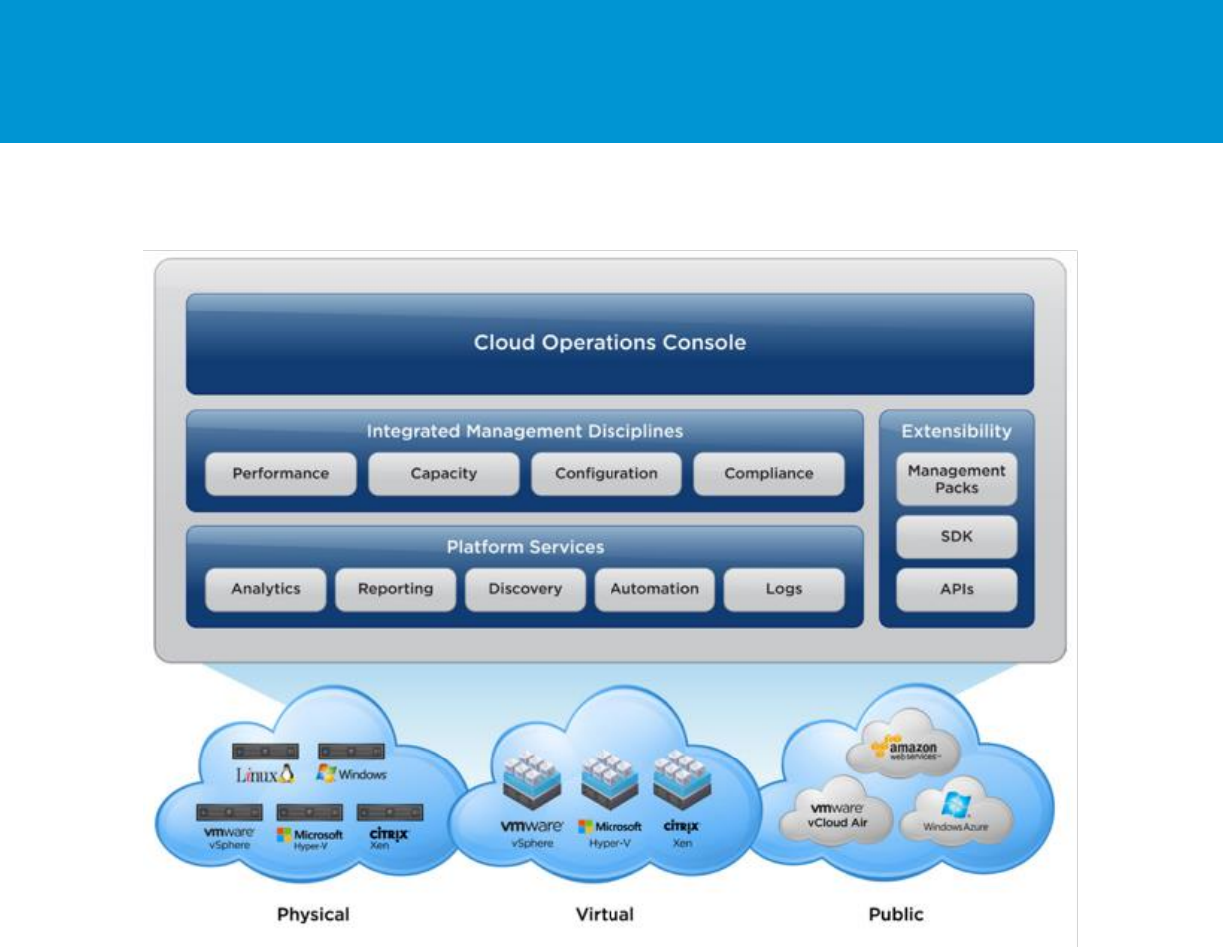

5.2 VMware vRealize Operations Manager ..................................................................................... 51

6. Acknowledgments .............................................................................................................................. 53

List of Figures

Figure 1. vSphere HA 9

Figure 2. vSphere FT 9

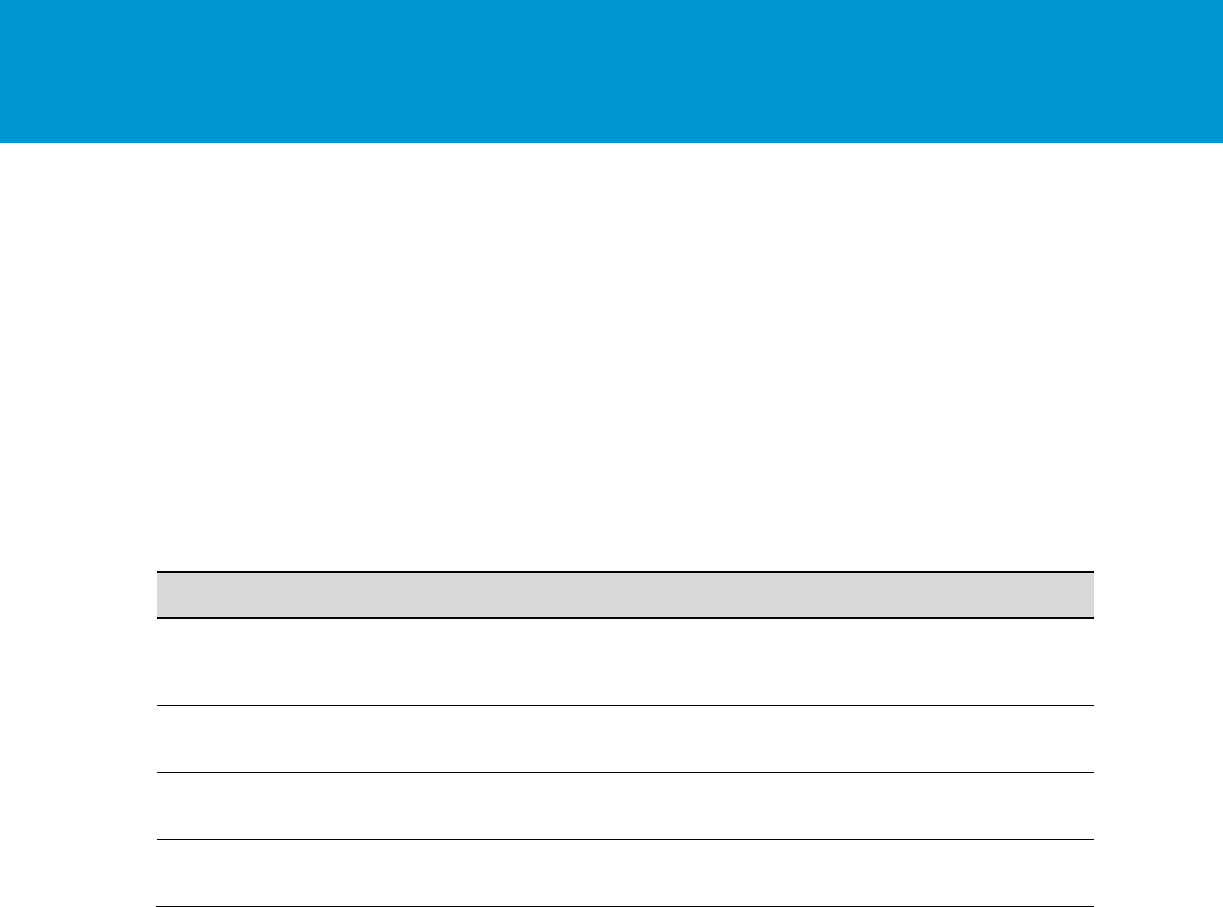

Figure 3. Recommended ESXi Host Power Management Setting 15

Figure 4. Windows Server CPU Core Parking 16

Figure 5. Recommended Windows Guest Power Scheme 16

Figure 6. An Example of a VM with NUMA Locality 18

Figure 7. An Example of a VM with vNUMA 19

Figure 8. Cores per Sockets 19

Figure 9. Enabling CPU Hot Plug 21

Figure 10. Memory Mappings Between Virtual, Guest, and Physical Memory 22

Figure 11. Sample Overhead Memory on Virtual Machines 23

Figure 12. Setting Memory Reservation 24

Figure 13. Setting Memory Hot Plug 25

Figure 14. VMware Storage Virtualization Stack 26

Figure 15. vSphere Virtual Volumes 28

Figure 16. vSphere Virtual Volumes High Level Architecture 29

Figure 17. VMware vSAN 30

Figure 18. vSAN Stretched Cluster 31

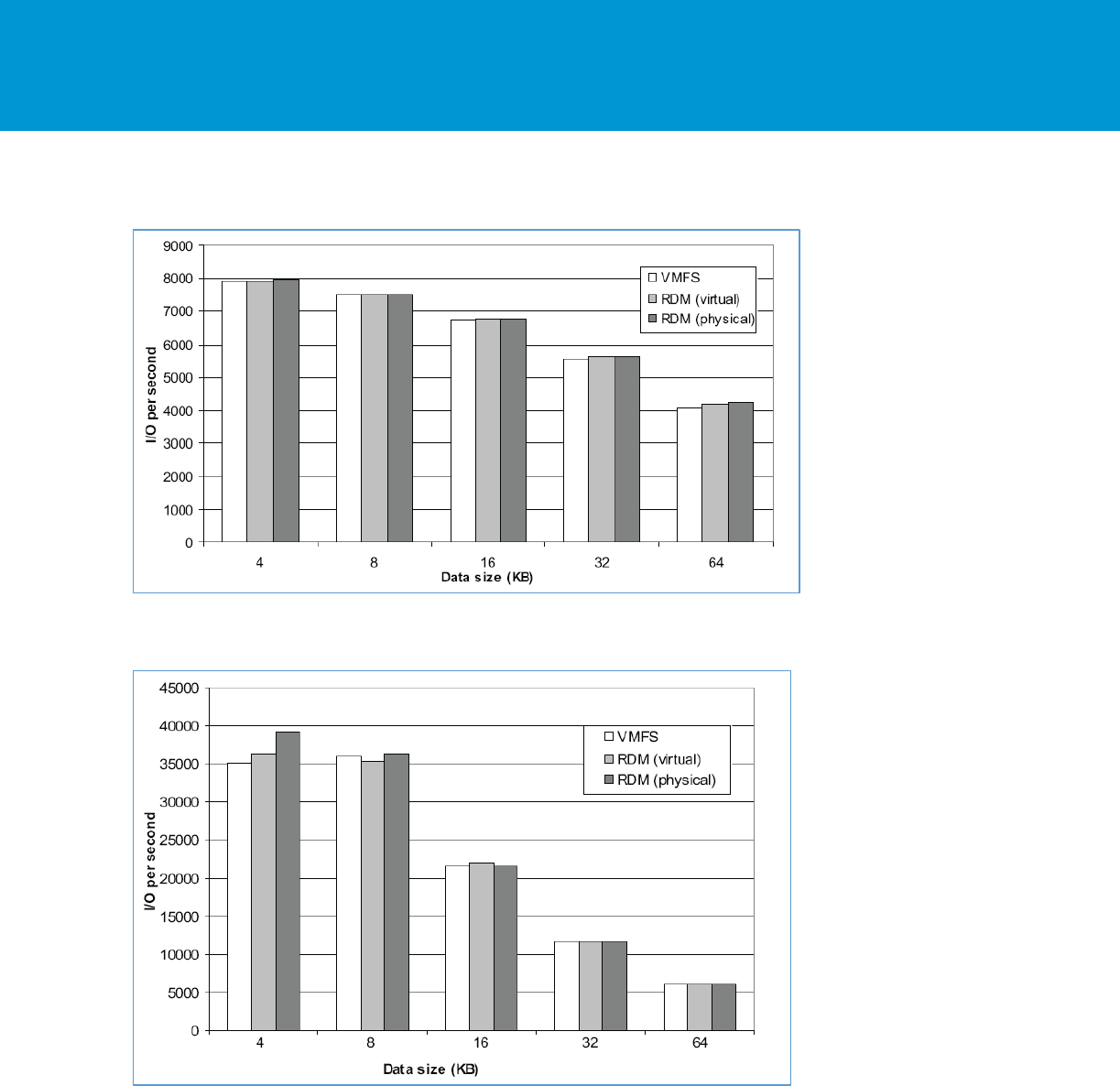

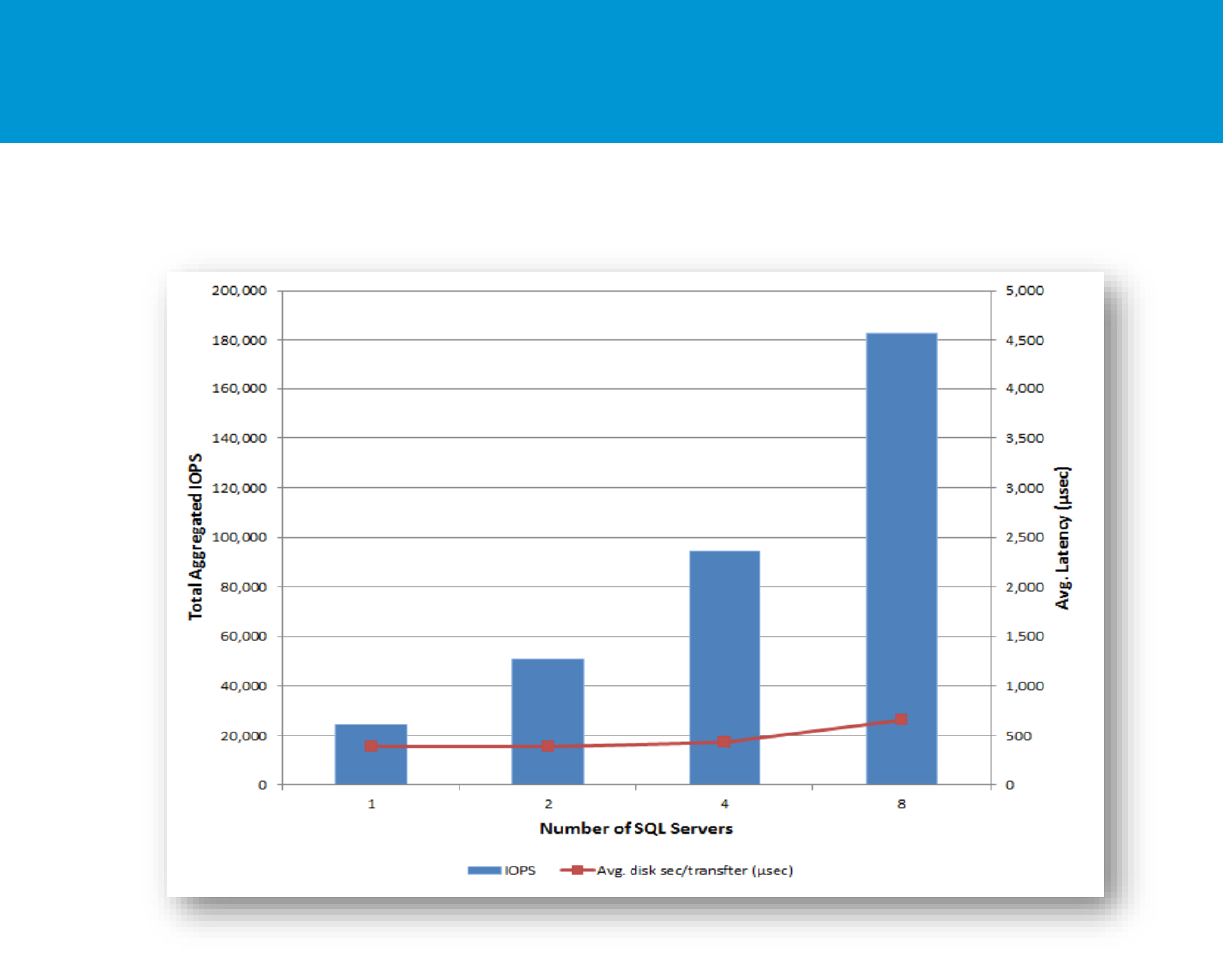

Figure 19. Random Mixed (50% Read/50% Write) I/O Operations per Second (Higher is Better) 34

Figure 20. Sequential Read I/O Operations per Second (Higher is Better) 34

Figure 21. XtremIO Performance with Consolidated SQL Server 38

Figure 22. Virtual Networking Concepts 40

Figure 23. NSX Distributed Firewall Capability 51

Figure 24. vRealize Operations 52

1. Introduction

Microsoft SQL Server is one of the most widely deployed database platforms in the world, with many

organizations having dozens or even hundreds of instances deployed in their environments. The flexibility

of SQL Server, with its rich application capabilities combined with the low costs of x86 computing, has led

to a wide variety of SQL Server installations ranging from large data warehouses to small, highly

specialized departmental and application databases. The flexibility at the database layer translates

directly into application flexibility, giving end users more useful application features and ultimately

improving productivity.

Application flexibility often comes at a cost to operations. As the number of applications in the enterprise

continues to grow, an increasing number of SQL Server installations are brought under lifecycle

management. Each application has its own set of requirements for the database layer, resulting in

multiple versions, patch levels, and maintenance processes. For this reason, many application owners

insist on having a SQL Server installation dedicated to an application. As application workloads vary

greatly, many SQL Server installations are allocated more hardware resources than they need, while

others are starved for compute resources.

These challenges have been recognized by many organizations in recent years. These organizations are now virtualizing their most critical applications and embracing a “virtualization first” policy, which means that applications are deployed on virtual machines (VMs) by default rather than on physical servers. Microsoft SQL Server has been the most virtualized critical application of the past few years.

Virtualizing Microsoft SQL Server with VMware vSphere® allows for the best of both worlds,

simultaneously optimizing compute resources through server consolidation and maintaining application

flexibility through role isolation, taking advantage of the SDDC (software-defined data center) and

capabilities such as network and storage virtualization. Microsoft SQL Server workloads can be migrated

to new sets of hardware in their current states without expensive and error-prone application remediation,

and without changing operating system or application versions or patch levels. For high performance

databases, VMware and partners have demonstrated the capabilities of vSphere to run the most

challenging Microsoft SQL Server workloads.

Virtualizing Microsoft SQL Server with vSphere enables many additional benefits. For example, VMware vSphere vMotion® enables seamless migration of virtual machines containing Microsoft SQL Server instances between physical servers and between data centers without interrupting users or their applications. VMware vSphere Distributed Resource Scheduler™ (DRS) can be used to dynamically balance Microsoft SQL Server workloads between physical servers. VMware vSphere High Availability (HA) and VMware vSphere Fault Tolerance (FT) provide simple and reliable protection for SQL Server virtual machines and can be used in conjunction with SQL Server’s own HA capabilities. Among other features, VMware NSX® provides network virtualization and dynamic security policy enforcement. VMware Site Recovery Manager™ provides disaster recovery plan orchestration. VMware offers many more capabilities that benefit virtualized applications.

For many organizations, the question is no longer whether to virtualize SQL Server. Rather, it is how to determine the best virtualization strategy to meet business requirements while keeping operational overhead to a minimum for cost effectiveness.

1.1 Purpose

This document provides best practice guidelines for designing Microsoft SQL Server virtual machines to

run on vSphere. The recommendations are not specific to a particular hardware set, or to the size and

scope of a particular SQL Server implementation. The examples and considerations in this document

provide guidance only, and do not represent strict design requirements, as varying application

requirements might result in many valid configuration possibilities.

1.2 Target Audience

This document assumes a basic knowledge and understanding of VMware vSphere and Microsoft SQL

Server.

Architectural staff can use this document to gain an understanding of how the system will work as a whole

as they design and implement various components.

Engineers and administrators can use this document as a catalog of technical capabilities.

DBA staff can use this document to gain an understanding of how SQL Server might fit into a virtual infrastructure.

Management staff and process owners can use this document to help model business processes to take

advantage of the savings and operational efficiencies achieved with virtualization.

2. Application Requirements Considerations

When considering SQL Server deployments as candidates for virtualization, you need a clear

understanding of the business and technical requirements for each instance. These requirements span

multiple dimensions, such as availability, performance, scalability, growth and headroom, patching, and

backups.

Use the following high-level procedure to simplify the process for characterizing SQL Server candidates

for virtualization:

1. Understand the characteristics of the database workload associated with the application accessing

SQL Server.

2. Understand availability and recovery requirements, including uptime guarantees (“number of nines”)

and disaster recovery.

3. Capture resource utilization baselines for existing physical databases.

4. Plan the migration/deployment to vSphere.
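As an illustration of step 2, the following Python sketch converts an availability target expressed as a “number of nines” into the downtime it permits per year. The function names and the 365-day year are assumptions made for this example; they are not part of any VMware or Microsoft tooling.

```python
# Illustrative only: translate an availability target ("number of nines")
# into the downtime budget it allows per year.

MINUTES_PER_YEAR = 365 * 24 * 60  # assumption: a 365-day year


def availability_for_nines(nines: int) -> float:
    """Return availability as a fraction, for example 3 nines -> 0.999."""
    return 1.0 - 10.0 ** (-nines)


def downtime_minutes_per_year(nines: int) -> float:
    """Return the maximum downtime per year, in minutes, for a number of nines."""
    return MINUTES_PER_YEAR * 10.0 ** (-nines)


if __name__ == "__main__":
    for n in range(2, 6):
        print(f"{n} nines ({availability_for_nines(n):.5%}): "
              f"{downtime_minutes_per_year(n):,.1f} minutes/year")
```

Comparing the resulting downtime budget with the expected recovery time of each availability option helps narrow down which options can plausibly meet a given uptime guarantee.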

2.1 Understand the Application Workload

SQL Server is a relational database management system (RDBMS) that runs workloads from applications. A single installation, or instance, of SQL Server running on Windows Server (or soon, Linux) can have one or more user databases. Data is stored and accessed through the user databases. The workloads that run against these databases can have different characteristics that influence deployment and other factors, such as feature usage or the availability architecture. These factors in turn influence how virtual machines are laid out on VMware ESXi™ hosts, as well as the underlying disk configuration.

Before deploying SQL Server instances inside a VM on vSphere, you must understand the business

requirements and the application workload for the SQL Server deployments you intend to support. Each

application has different requirements for capacity, performance, and availability. Consequently, each

deployment must be designed to optimally support those requirements. Many organizations classify SQL

Server installations into multiple management tiers based on service level agreements (SLAs), recovery

point objectives (RPOs), and recovery time objectives (RTOs). The classification of the type of workload a

SQL Server runs often dictates the architecture and resources allocated to it. The following are some

common examples of workload types. Mixing workload types in a single instance of SQL Server is not

recommended.

OLTP (online transaction processing) databases are often the most critical databases in an organization. These databases usually back customer-facing applications and are considered essential to the company’s core operations. Mission-critical OLTP databases and the applications they support often have SLAs that require very high levels of performance, and they are very sensitive to performance degradation and reduced availability. SQL Server VMs running mission-critical OLTP databases might require more careful resource allocation (CPU, memory, disk, and network) to achieve optimal performance. They might also be candidates for clustering with Windows Server Failover Clustering, running either an AlwaysOn Failover Cluster Instance (FCI) or an AlwaysOn Availability Group (AG). These types of databases are usually characterized by mostly intensive random writes to disk and sustained CPU utilization during working hours.

DSS (decision support systems) databases, also referred to as data warehouses, are mission critical in many organizations that rely on analytics for their business. These databases are very sensitive to CPU utilization and read operations from disk when queries are being run. In many organizations, DSS databases are the most critical during month/quarter/year end.

Batch, reporting services, and ETL databases are busy only during specific periods for such tasks as

reporting, batch jobs, and application integration or ETL workloads. These databases and

applications might be essential to your company’s operations, but they have much less stringent

requirements for performance and availability. They may, nonetheless, have other very stringent

business requirements, such as data validation and audit trails.

Other smaller, lightly used databases typically support departmental applications that may not

adversely affect your company’s real-time operations if there is an outage. Many times, you can

tolerate such databases and applications being down for extended periods.

Resource needs for SQL Server deployments are defined in terms of CPU, memory, disk and network

I/O, user connections, transaction throughput, query execution efficiency/latencies, and database size.

Some customers have established targets for system utilization on hosts running SQL Server, for

example, 80 percent CPU utilization, leaving enough headroom for any usage spikes and/or availability.
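The headroom arithmetic behind such a utilization target can be sketched as follows. This is a minimal Python example, not a VMware sizing tool: the function names, the use of GHz as the unit of CPU demand, and the sample figures in the usage note are all hypothetical.

```python
from typing import Iterable


def required_capacity(peak_demand: float, target_utilization: float = 0.80) -> float:
    """Capacity needed so that peak_demand uses at most target_utilization of it."""
    if not 0 < target_utilization <= 1:
        raise ValueError("target_utilization must be in the range (0, 1]")
    return peak_demand / target_utilization


def fits_within_target(vm_peak_demands: Iterable[float],
                       host_capacity: float,
                       target_utilization: float = 0.80) -> bool:
    """True if the summed VM peak demand stays within the host's utilization target."""
    return sum(vm_peak_demands) <= host_capacity * target_utilization
```

For example, under these assumptions a combined peak demand of 32 GHz calls for about 40 GHz of host capacity at an 80 percent target, and three VMs peaking at 10, 12, and 8 GHz fit on a 40 GHz host while two VMs peaking at 20 and 14 GHz do not.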

Understanding database workloads and how to allocate resources to meet service levels helps you to

define appropriate virtual machine configurations for individual SQL Server databases. Because you can

consolidate multiple workloads on a single vSphere host, this characterization also helps you to design a

vSphere and storage hardware configuration that provides the resources you need to deploy multiple

workloads successfully on vSphere.

2.2 Availability and Recovery Options

Running Microsoft SQL Server on vSphere offers many options for database availability, backup and

disaster recovery utilizing the best features from both VMware and Microsoft. This section covers the

different options that exist for availability and recovery.

2.2.1 VMware Business Continuity Options

VMware technologies, such as vSphere HA, vSphere Fault Tolerance, vSphere vMotion, VMware vSphere Storage vMotion®, and VMware Site Recovery Manager™, can be used in a business continuity design to protect SQL Server instances from planned and unplanned downtime. These technologies protect SQL Server instances against failures ranging from a single hardware component to a full site and, in conjunction with native SQL Server business continuity capabilities, increase availability.

2.2.1.1. VMware vSphere High Availability

vSphere HA provides easy-to-use, cost-effective high availability for applications running in virtual machines. It leverages multiple ESXi hosts configured as a cluster to provide rapid recovery from outages. vSphere HA protects application availability in the following ways:

Protects against a physical server failure by restarting the virtual machines on other hosts within the

cluster in case of a failure.

Protects against operating system failure by continuous monitoring using a heartbeat and by

restarting the virtual machine in case of a failure.

Detects the hardware conditions of a host proactively and enables evacuation of the virtual machines

before an issue causes an outage.

Protects against datastore accessibility failures by restarting affected virtual machines on other hosts

that still have access to their datastores.

Protects virtual machines against network isolation by restarting them if their host becomes isolated

on the management network. This protection is provided even if the network has become partitioned.

Provides APIs for protecting against application failure allowing third-party tools to continuously

monitor an application and reset the virtual machine if a failure is detected.

Figure 1. vSphere HA

2.2.1.2. vSphere Fault Tolerance

vSphere Fault Tolerance provides a higher level of availability, allowing users to protect any virtual

machine from a physical host failure with no loss of data, transactions, or connections. vSphere FT

provides continuous availability by verifying that the states of the primary and secondary VMs are

identical at any point in the instruction execution of the virtual machine. If either the host running the

primary VM or the host running the secondary VM fails, an immediate and transparent failover occurs.

The functioning ESXi host seamlessly becomes the primary VM host without losing network connections

or in-progress transactions. With transparent failover, there is no data loss, and network connections are

maintained. After a transparent failover occurs, a new secondary VM is respawned and redundancy is re-

established. The entire process is transparent, fully automated, and occurs even if VMware vCenter

Server™ is unavailable.

There are licensing requirements and interoperability limitations to consider when using fault tolerance, as detailed in the VMware vSphere Availability guide at https://pubs.vmware.com/vsphere-60/topic/com.vmware.ICbase/PDF/vsphere-esxi-vcenter-server-60-availability-guide.pdf.

Figure 2. vSphere FT

2.2.1.3. vSphere vMotion and vSphere Storage vMotion

Planned downtime typically accounts for more than 80 percent of data center downtime. Hardware

maintenance, server migration, and firmware updates all require downtime for physical servers and

storage systems. To minimize the impact of this downtime, organizations are forced to delay maintenance

until inconvenient and difficult-to-schedule downtime windows.

The vSphere vMotion and vSphere Storage vMotion functionality in vSphere makes it possible for

organizations to reduce planned downtime because workloads in a VMware environment can be

dynamically moved to different physical servers or to different underlying storage without any service

interruption. Administrators can perform faster and completely transparent maintenance operations,

without being forced to schedule inconvenient maintenance windows.

vSphere 6 introduced three new vSphere vMotion capabilities:

Cross vCenter vSphere vMotion – Allows for live migration of virtual machines between vCenter

instances without any service interruption.

Long-distance vSphere vMotion – Tolerates network round-trip times (RTT) of up to 150 milliseconds between the source and destination physical servers. This technology allows for disaster avoidance over much greater distances and further removes the constraints of the physical world from virtual machines.

vMotion FCI node – Starting with vSphere 6, it is possible to use vSphere vMotion to migrate virtual

machines that are part of Microsoft failover clustering using a shared physical RDM disk.

These new vSphere vMotion capabilities further remove the limitations of the physical world, allowing customers to migrate SQL Server VMs between physical servers, storage systems, networks, and even data centers with no service disruption.

2.2.1.4. Site Recovery Manager

Site Recovery Manager is a business continuity and disaster recovery solution that helps you to plan,

test, and run the recovery of virtual machines between a protected site and a recovery site.

You can use Site Recovery Manager to implement different types of recovery from the protected site to

the recovery site.

Planned migration – The orderly evacuation of virtual machines from the protected site to the

recovery site. Planned migration prevents data loss when migrating workloads in an orderly fashion.

For planned migration to succeed, both sites must be running and fully functioning.

Disaster recovery – Similar to planned migration, except that disaster recovery does not require both sites to be up and running, for example, when the protected site goes offline unexpectedly. During a disaster recovery operation, failures of operations on the protected site are reported but otherwise ignored.

Site Recovery Manager orchestrates the recovery process with the replication mechanisms to minimize

data loss and system down time.

A recovery plan specifies the order in which virtual machines start up on the recovery site. A recovery

plan specifies network parameters, such as IP addresses, and can contain user-specified scripts that Site

Recovery Manager can run to perform custom recovery actions on virtual machines.

Site Recovery Manager lets you test recovery plans. You conduct tests by using a temporary copy of the

replicated data in a way that does not disrupt ongoing operations at either site.

For more details and best practices on vSphere business continuity capabilities, see the VMware vSphere Availability guide at https://pubs.vmware.com/vsphere-60/topic/com.vmware.ICbase/PDF/vsphere-esxi-vcenter-server-60-availability-guide.pdf.

For more details and best practices on Site Recovery Manager, see the Site Recovery Manager documentation at https://pubs.vmware.com/srm-60/index.jsp.

2.2.2 Native SQL Server Capabilities

At the application level, all Microsoft features and techniques are supported on vSphere, including SQL

Server AlwaysOn Availability Groups, database mirroring, failover clustering, and log shipping. These

Microsoft features can be combined with vSphere features to create flexible availability and recovery

scenarios, applying the most efficient and appropriate tools for each use case.

The following table lists SQL Server availability options and their ability to meet various recovery time

objectives (RTO) and recovery point objectives (RPO). Before choosing any one option, evaluate your

own business requirements to determine which scenario best meets your specific needs.

Table 1. SQL Server 2012 High Availability Options

| Technology | Granularity | Storage Type | RPO – Data Loss | RTO – Downtime |
|---|---|---|---|---|
| AlwaysOn Availability Groups | Database | Non-shared | None (with synchronous commit mode) | ~3 seconds or Administrator recovery |
| AlwaysOn Failover Cluster Instances | Instance | Shared | None | ~30 seconds |
| Database Mirroring | Database | Non-shared | None (with high safety mode) | < 3 seconds or Administrator recovery |
| Log Shipping | Database | Non-shared | Possible transaction log | Administrator recovery |
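To show how Table 1 might be used during requirements analysis, the sketch below encodes its rows as data and filters them. The class, the field names, and the reduction of the RPO and RTO columns to two Boolean flags are simplifying assumptions made for illustration; mode-dependent entries (for example, synchronous commit) are treated as meeting the stricter target.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class HaOption:
    technology: str
    granularity: str           # "Database" or "Instance"
    storage: str               # "Shared" or "Non-shared"
    zero_data_loss: bool       # Table 1 lists an RPO of "None" (may depend on mode)
    failover_in_seconds: bool  # Table 1 lists an RTO measured in seconds


# Rows transcribed from Table 1; the two Boolean columns are a simplification.
TABLE_1 = [
    HaOption("AlwaysOn Availability Groups", "Database", "Non-shared", True, True),
    HaOption("AlwaysOn Failover Cluster Instances", "Instance", "Shared", True, True),
    HaOption("Database Mirroring", "Database", "Non-shared", True, True),
    HaOption("Log Shipping", "Database", "Non-shared", False, False),
]


def candidates(need_zero_data_loss: bool,
               need_failover_in_seconds: bool,
               storage: Optional[str] = None) -> List[str]:
    """Return the technologies from Table 1 that satisfy the stated needs."""
    result = []
    for option in TABLE_1:
        if need_zero_data_loss and not option.zero_data_loss:
            continue
        if need_failover_in_seconds and not option.failover_in_seconds:
            continue
        if storage is not None and option.storage != storage:
            continue
        result.append(option.technology)
    return result


if __name__ == "__main__":
    print(candidates(need_zero_data_loss=True, need_failover_in_seconds=True))
    print(candidates(False, False, storage="Shared"))
```

A filter like this only produces a shortlist. The final choice still depends on the business requirements evaluation described above.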

For guidelines and information on the supported configuration for setting up any Microsoft clustering

technology on vSphere, including AlwaysOn Availability Groups, see the Knowledge Base article

Microsoft Clustering on VMware vSphere: Guidelines for supported configurations (1037959) at

http://kb.vmware.com/kb/1037959.

For a more detailed look at the available options, their requirements, and how to plan mission-critical deployments, see the following guides:

http://www.vmware.com/content/dam/digitalmarketing/vmware/en/pdf/solutions/sql-server-on-vmware-availability-and-recovery-options.pdf

Planning a Highly Available, Mission Critical SQL Server Deployments with VMware vSphere

3. Best Practices for Deploying SQL Server Using vSphere

A properly designed virtualized SQL Server instance, running in a vSphere VM on Windows Server or, with SQL Server v.Next, on Linux, is crucial to the successful implementation of enterprise applications. One main difference between designing for performance of critical databases and designing for consolidation, which is the traditional practice when virtualizing, is that when you design for performance you strive to reduce resource contention between VMs as much as possible, or even to eliminate it altogether. The following sections outline VMware recommended practices for designing your vSphere environment to optimize for best performance.

3.1 Rightsizing

Rightsizing means sizing a VM for what its workload actually requires instead of over-allocating resources, which is a common sizing practice for physical servers. Rightsizing is imperative when sizing virtual machines. For example, if a newly designed database server requires eight CPUs and is deployed on a physical machine, the DBA typically asks for more CPU power than is required at that time. This is because it is typically more difficult for the DBA to add CPUs to a physical server after it is deployed. The situation is similar for memory and other aspects of a physical deployment: it is easier to build in capacity than to adjust it later, which often requires additional cost and downtime. The difficulty of adjusting capacity later is also a problem if a server started off undersized and cannot handle the workload it is supposed to run.

However, when sizing SQL Server deployments to run on a VM, it is important to assign that VM only the

exact amount of resources it requires at that time. This leads to optimized performance and the lowest

overhead, and is where licensing savings can be obtained with critical production SQL Server

virtualization. Subsequently, resources can be added non-disruptively, or with a short reboot of the VM.

To find out how many resources are required for the target SQL Server VM, monitor the source physical SQL Server (if one exists) using dynamic management views (DMV)-based tools. There are two ways to size the VM based on the requirements:

When a SQL Server is considered critical with high performance requirements, take the most sustained peak as the sizing baseline.

With lower tier SQL Server implementations, where consolidation takes higher priority than performance, an average can be considered for the sizing baseline.

When in doubt, start with the lower amount of resources and grow as necessary.
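The two baselines described above can be sketched in Python as follows. The guide does not define “most sustained peak” precisely; this example assumes it is the highest average over any window of consecutive samples, and the function names, window length, and sample data are hypothetical.

```python
from statistics import mean
from typing import Sequence


def average_baseline(samples: Sequence[float]) -> float:
    """Sizing baseline for lower tier workloads: the plain average of all samples."""
    return mean(samples)


def sustained_peak_baseline(samples: Sequence[float], window: int) -> float:
    """Sizing baseline for critical workloads.

    Assumption: the "most sustained peak" is the highest mean over any
    `window` consecutive samples, so short spikes do not dominate.
    """
    if window < 1 or window > len(samples):
        raise ValueError("window must be between 1 and len(samples)")
    return max(mean(samples[i:i + window])
               for i in range(len(samples) - window + 1))


if __name__ == "__main__":
    # Hypothetical CPU utilization samples (percent) at a fixed interval.
    cpu_percent = [20, 25, 90, 30, 60, 70, 65, 20]
    print("average baseline:", average_baseline(cpu_percent))
    print("sustained peak baseline:", sustained_peak_baseline(cpu_percent, window=3))
```

With this sample data, the isolated 90 percent spike does not set the sustained peak, because the run of 60, 70, and 65 percent has the highest three-sample average. Choosing the window length is a judgment call that depends on how long a peak must last before it matters to the application.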

After the VM has been created, its resource allocation can be adjusted from the original baseline. Adjustments can be based on additional monitoring using a DMV-based tool, similar to monitoring a physical SQL Server deployment. VMware vRealize® Operations Manager™ can perform DMV-based monitoring with ongoing capacity management and will alert if there is resource waste or contention.

Rightsizing and not over-allocating resources is important for the following reasons:

• Configuring a VM with more virtual CPUs than its workload can use might cause slightly increased resource usage, potentially impacting performance on heavily loaded systems. Common examples include a single-threaded workload running in a multiple-vCPU VM, or a multithreaded workload in a virtual machine with more vCPUs than the workload can effectively use. Even if the guest operating system does not use some of its vCPUs, configuring VMs with those vCPUs still imposes some small resource requirements on ESXi that translate to real CPU consumption on the host.

• Over-allocating memory also unnecessarily increases the VM memory overhead. While ESXi can typically reclaim the over-allocated memory, it cannot reclaim the overhead associated with this over-allocated memory, thus consuming memory that could otherwise be used to support more VMs.

• Be careful when measuring the amount of memory consumed by a SQL Server VM with the VMware Active Memory counter. Applications that contain their own memory management, such as SQL Server, can skew this counter. Consult with the database administrator to confirm memory consumption rates before adjusting the memory allocated to a SQL Server VM.

• Having more vCPUs assigned to the virtual SQL Server also has licensing implications in certain scenarios, such as per-core licenses.

• Adding resources to VMs (a click of a button) is much easier than adding resources to physical machines.

For more information about sizing for performance, see Performance Best Practices for VMware vSphere

6.0 at http://www.vmware.com/files/pdf/techpaper/VMware-PerfBest-Practices-vSphere6-0.pdf.

3.2 Host Configuration

3.2.1 BIOS/UEFI and Firmware Versions

As a best practice, update the BIOS/UEFI on the physical server that is running critical systems to the

latest version and make sure all the I/O devices have the latest supported firmware version.

3.2.2 BIOS/UEFI Settings

The following BIOS/UEFI settings are recommended for high performance environments (when

applicable):

• Enable Turbo Boost.

• Enable Hyper-threading.

• Verify that all ESXi hosts have NUMA enabled in the BIOS/UEFI. In some systems (for example, HP servers), NUMA is enabled by disabling node interleaving. Consult your server hardware vendor for the applicable BIOS settings for this feature.

• Enable advanced CPU features, such as VT-x/AMD-V, EPT, and RVI.

• Disable any devices that are not used, such as serial ports.

• Set Power Management (or its vendor-specific equivalent label) to “OS controlled”. This enables the ESXi hypervisor to control power management based on the selected policy. See the following section for more information.

• Disable all processor C-states (including the C1E halt state). These enhanced power management schemes can introduce memory latency and sub-optimal CPU state changes (Halt-to-Full), resulting in reduced performance for the VM.

3.2.3 Power Management

The ESXi hypervisor provides a high performance and competitive platform that efficiently runs many Tier

1 application workloads in VMs. By default, ESXi has been heavily tuned for driving high I/O throughput

efficiently by utilizing fewer CPU cycles and conserving power, as required by a wide range of workloads.

However, many applications require I/O latency to be minimized, even at the expense of higher CPU

utilization and greater power consumption.

VMware defines latency-sensitive applications as workloads that require optimizing for end-to-end latencies of a few microseconds to a few tens of microseconds. This does not apply to applications or workloads with end-to-end latencies in the hundreds of microseconds to tens of milliseconds. In terms of network access times, SQL Server is not typically considered a “latency sensitive” application.

However, given the adverse impact of incorrect power settings in a Windows Server operating system,

customers must pay special attention to power management. See Best Practices for Performance Tuning

of Latency-Sensitive Workloads in vSphere VMs (http://www.vmware.com/files/pdf/techpaper/VMW-

Tuning-Latency-Sensitive-Workloads.pdf).

Server hardware and operating systems are usually engineered to minimize power consumption for

economic reasons. Windows Server and the ESXi hypervisor both favor minimized power consumption

over performance. While previous versions of ESXi defaulted to “high performance” power schemes,

vSphere 5.0 and later defaults to a “balanced” power scheme. For critical applications, such as SQL

Server, the default power scheme in vSphere 6.0 is not recommended.

There are three distinct areas of power management in an ESXi hypervisor virtual environment: server

hardware, hypervisor, and guest operating system. The following section provides power management

and power setting recommendations covering all of these areas.

3.2.3.1. ESXi Host Power Settings

An ESXi host can take advantage of several power management features that the hardware provides to

adjust the trade-off between performance and power use. You can control how ESXi uses these features

by selecting a power management policy.

In general, selecting a high-performance policy provides more absolute performance, but at lower

efficiency (performance per watt). Lower-power policies provide lower absolute performance, but at

higher efficiency. ESXi provides five power management policies. If the host does not support power

management, or if the BIOS/UEFI settings specify that the host operating system is not allowed to

manage power, only the “Not Supported” policy is available.

Table 2. CPU Power Management Policies

| Power Management Policy | Description |
|---|---|
| High Performance | The VMkernel detects certain power management features, but will not use them unless the BIOS requests them for power capping or thermal events. This is the recommended power policy for a SQL Server running on ESXi. |
| Balanced (default) | The VMkernel uses the available power management features conservatively to reduce host energy consumption with minimal compromise to performance. |
| Low Power | The VMkernel aggressively uses available power management features to reduce host energy consumption at the risk of lower performance. |
| Custom | The VMkernel bases its power management policy on the values of several advanced configuration parameters. You can set these parameters in the VMware vSphere Web Client Advanced Settings dialog box. |
| Not supported | The host does not support any power management features, or power management is not enabled in the BIOS. |

VMware recommends setting the high performance ESXi host power policy for critical SQL Server VMs.

You select a policy for a host using the vSphere Web Client. If you do not select a policy, ESXi uses

Balanced by default.

Figure 3. Recommended ESXi Host Power Management Setting

When a CPU runs at lower frequency, it can also run at lower voltage, which saves power. This type of

power management is called dynamic voltage and frequency scaling (DVFS). ESXi attempts to adjust

CPU frequencies so that VM performance is not affected.

When a CPU is idle, ESXi can take advantage of deep halt states (known as C-states). The deeper the C-

state, the less power the CPU uses, but the longer it takes for the CPU to resume running. When a CPU

becomes idle, ESXi applies an algorithm to predict how long it will be in an idle state and chooses an

appropriate C-state to enter. In power management policies that do not use deep C-states, ESXi uses

only the shallowest halt state (C1) for idle CPUs.

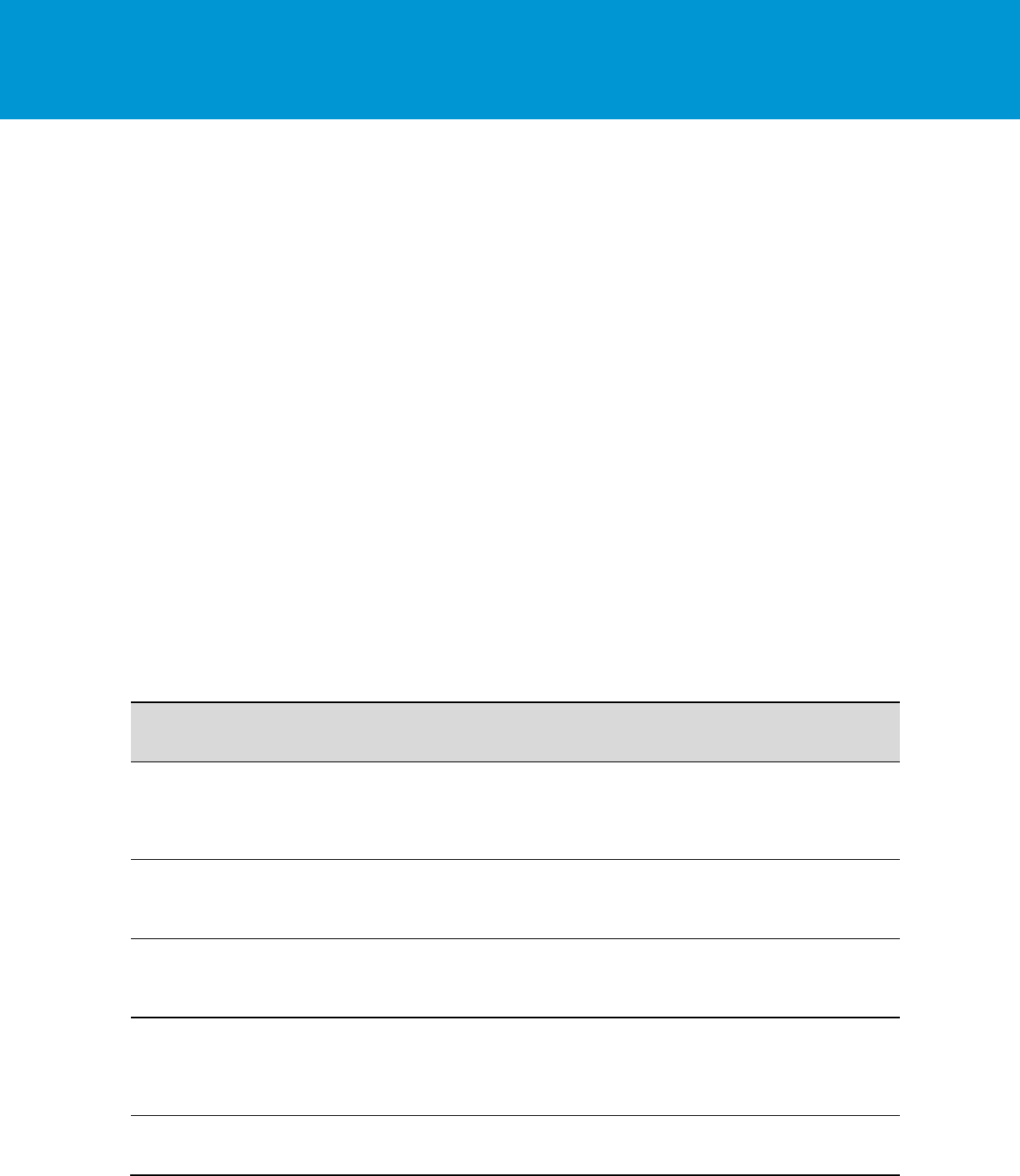

3.2.3.2. Windows Guest Power Settings

The default power policy option in Windows Server 2016 is “Balanced”. This configuration allows

Windows Server to reduce power consumption by periodically throttling power to the CPU and turning

off devices such as the network cards in the guest when Windows Server determines that they are idle or

unused. This capability is inefficient for critical SQL Server workloads due to the latency and disruption

introduced by the act of powering-off and powering-on CPUs and devices. Allowing Windows Server to

throttle CPUs can result in what Microsoft describes as core parking and should be avoided. For more

information, see Server Hardware Power Considerations at https://msdn.microsoft.com/en-

us/library/dn567635.aspx.

Figure 4. Windows Server CPU Core Parking

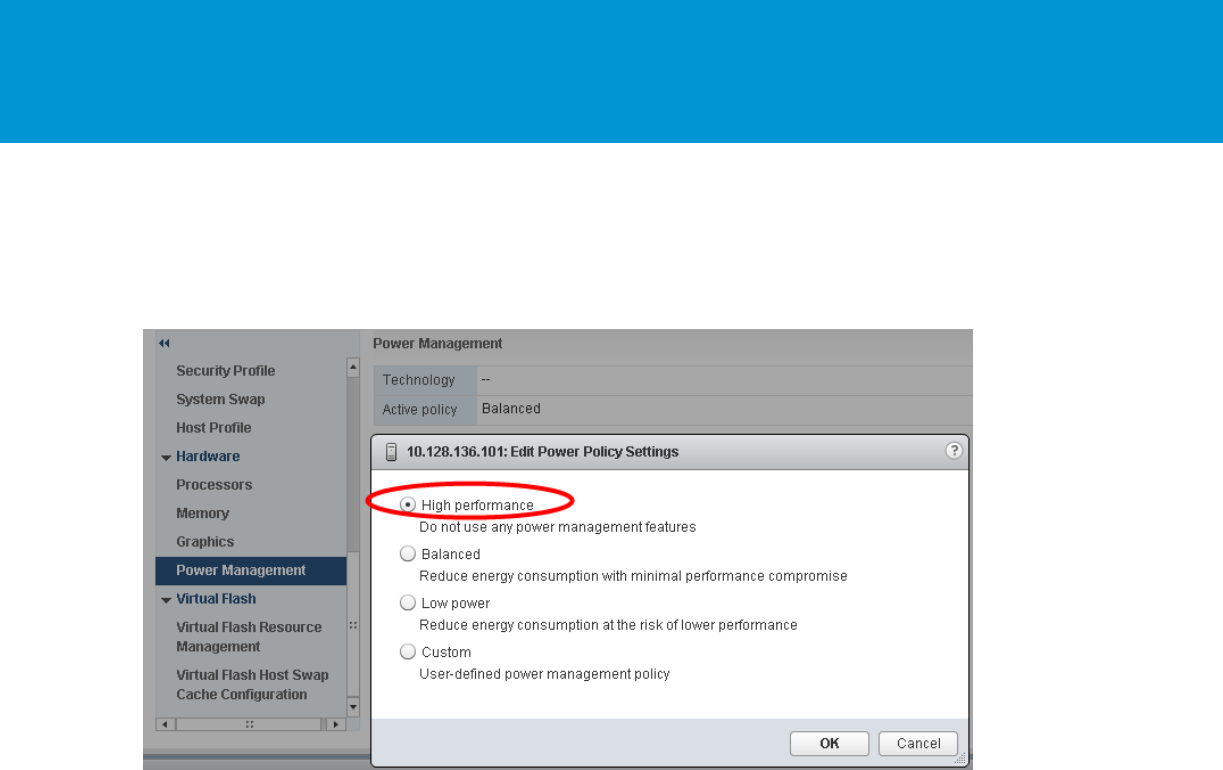

Microsoft recommends the high-performance power management policy for applications requiring stability

and performance. VMware supports this recommendation and encourages customers to incorporate it

into their SQL Server tuning and administration practice for virtualized deployment.

Figure 5. Recommended Windows Guest Power Scheme

3.3 CPU Configuration

3.3.1 Physical, Virtual, and Logical CPUs and Cores

VMware uses the terms virtual CPU (vCPU) and physical CPU (pCPU), and virtual core (vCore) and

physical core (pCore) to distinguish between the processors within the VM and the underlying physical

x86/x64-based processor cores. VMs with more than one vCPU are called symmetric multiprocessing

(SMP) virtual machines. Another term in use is logical CPU, which refers to a hardware thread that Hyper-threading exposes on a physical core.

3.3.2 Allocating vCPU to SQL Server Virtual Machines

When performance is the highest priority of the SQL Server design, VMware recommends that, for the

initial sizing, the total number of vCPUs assigned to all the VMs be no more than the total number of

physical cores (rather than the logical cores) available on the ESXi host machine. By following this

guideline, you can gauge performance and utilization within the environment until you can identify

potential excess capacity that could be used for additional workloads. For example, if the physical server

that the SQL Server workloads run on has 16 physical CPU cores, avoid allocating more than 16

vCPUs for the VMs on that vSphere host during the initial virtualization effort. This initial conservative

sizing approach helps rule out CPU resource contention as a possible contributing factor in the event of

sub-optimal performance during and after the virtualization project. After you have determined that there

is excess capacity to be used, you can increase density in that physical server by adding more workloads

into the vSphere cluster and allocating vCPUs beyond the available physical cores.

Lower-tier SQL Server workloads typically are less latency sensitive, so in general the goal is to maximize

use of system resources and achieve higher consolidation ratios rather than maximize performance.

The vSphere CPU scheduler’s policy is tuned to balance between maximum throughput and fairness

between VMs. For lower-tier databases, a reasonable CPU overcommitment can increase overall system

throughput, maximize license savings, and continue to maintain adequate performance.
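The initial sizing rule above comes down to comparing the total vCPU count on a host with its physical core count. The following Python sketch shows that check; the function names and the VM sizes are illustrative assumptions.

```python
def vcpu_to_pcore_ratio(vm_vcpus, physical_cores):
    """Ratio of all vCPUs allocated on a host to its physical (not logical) cores."""
    return sum(vm_vcpus) / physical_cores

def within_initial_sizing(vm_vcpus, physical_cores):
    """True when total vCPUs do not exceed the physical cores (ratio <= 1)."""
    return sum(vm_vcpus) <= physical_cores

# Host with 16 physical cores, as in the example above
print(within_initial_sizing([8, 4, 4], 16))   # True: 16 vCPUs on 16 cores
print(vcpu_to_pcore_ratio([8, 8, 4], 16))     # 1.25: CPU is overcommitted
```

A ratio above 1 is not wrong in itself. For lower-tier workloads it is the expected outcome of consolidation, but it should be a deliberate decision made after utilization has been measured.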

3.3.3 Hyper-threading

Hyper-threading is an Intel technology that exposes two hardware contexts (threads) from a single

physical core, also referred to as logical CPUs. This is not the same as having twice the number of CPUs

or cores. By keeping the processor pipeline busier and allowing the hypervisor to have more CPU

scheduling opportunities, Hyper-threading generally improves the overall host throughput anywhere from

10 to 30 percent.

VMware recommends enabling Hyper-threading in the BIOS/UEFI so that ESXi can take advantage of

this technology. ESXi makes conscious CPU management decisions regarding mapping vCPUs to

physical cores, taking Hyper-threading into account. An example is a VM with four virtual CPUs. Each

vCPU will be mapped to a different physical core and not to two logical threads that are part of the same

physical core.

Hyper-threading can be controlled on a per-VM basis in the Hyper-threading Sharing section on the

Properties tab of a VM. This setting provides control of whether a VM should be scheduled to share a

physical core if Hyper-threading is enabled on the host.

• Any – This is the default setting. The vCPUs of this VM can freely share cores with other virtual CPUs of this or other virtual machines. VMware recommends leaving this setting to allow the CPU scheduler the maximum scheduling opportunities.

• None – The vCPUs of this VM have exclusive use of a processor whenever they are scheduled to the core. Selecting None in effect disables Hyper-threading for your VM.

• Internal – This option is similar to None. vCPUs from this VM cannot share cores with vCPUs from other VMs. They can share cores with the other vCPUs from the same VM.

See additional information about Hyper-threading on a vSphere host in VMware vSphere Resource

Management (https://pubs.vmware.com/vsphere-60/topic/com.vmware.ICbase/PDF/vsphere-esxi-vcenter-

server-60-resource-management-guide.pdf ).

It is important to remember to account for the differences between a processor thread and a physical

CPU/core during capacity planning for your SQL Server deployment.

3.3.4 NUMA Consideration

vSphere supports non-uniform memory access (NUMA) systems. In a NUMA system, there are multiple

NUMA nodes that consist of a set of processors and the memory. The access to memory in the same

node is local. The access to the other node is remote. The remote access takes more cycles because it

involves a multi-hop operation. Due to this asymmetric memory access latency, keeping the memory

access local or maximizing the memory locality improves performance. On the other hand, CPU load

balancing across NUMA nodes is also crucial to performance. The CPU scheduler achieves both aspects

of performance.

The intelligent, adaptive NUMA scheduling and memory placement policies in vSphere can manage all

VMs transparently, so administrators do not have to deal with the complexity of balancing VMs between

nodes by hand. To reduce memory access latency, consider the following information.

For small VMs running SQL Server, allocate vCPUs equal to or less than the number of cores in each

physical NUMA node. When you do this, the guest operating system or SQL Server does not need to

consider NUMA because ESXi makes sure memory accesses are as local as possible. In the following

example, there is a VM with 6 vCPUs and 32 GB of RAM residing in a server that has 8 cores and 96 GB

of RAM in each NUMA node, with a total of 16 CPU cores and 192 GB RAM. The ESXi server places the

VM entirely in one NUMA node, making sure all processes stay local and performance is optimized.

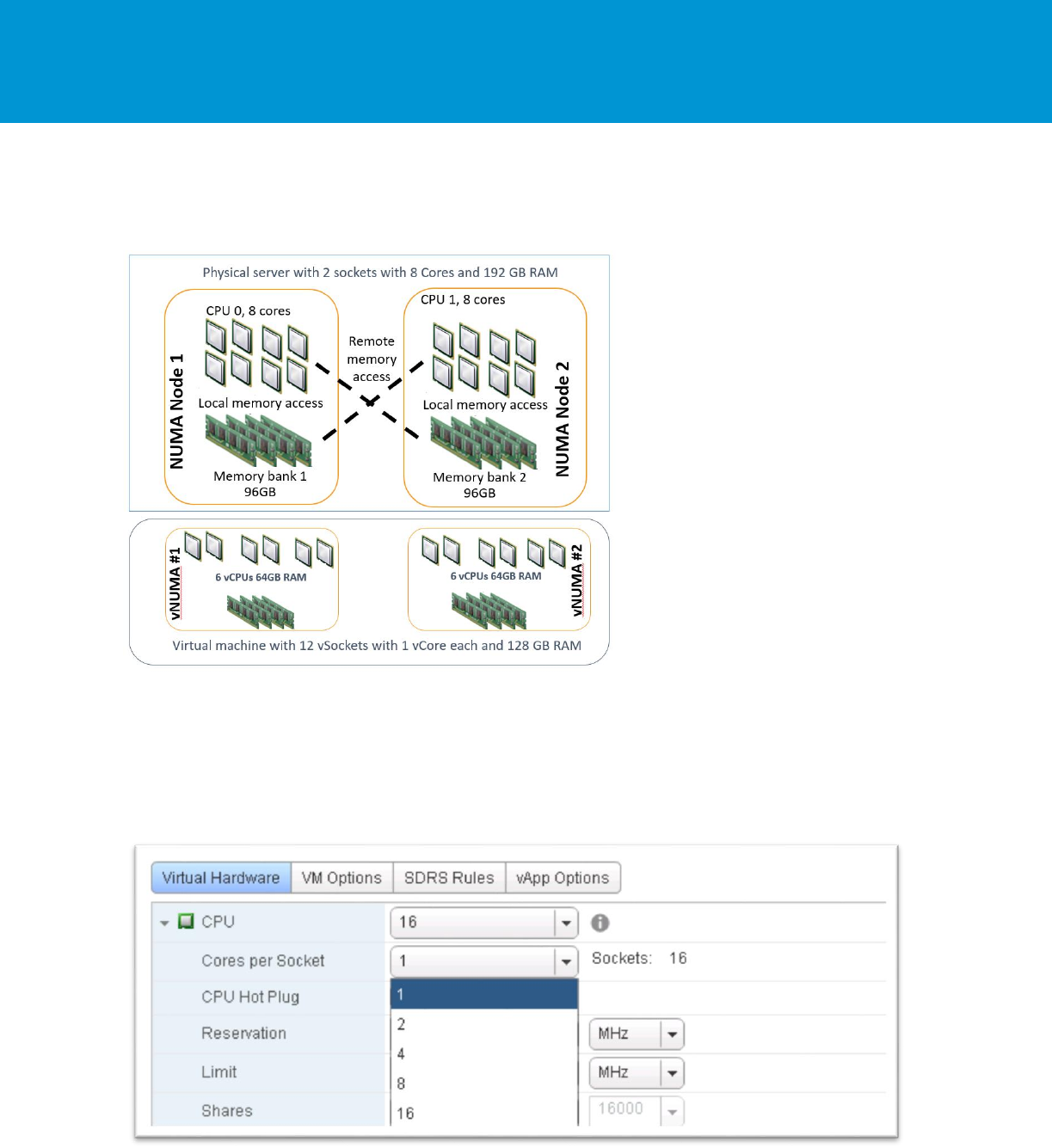

Figure 6. An Example of a VM with NUMA Locality

For wide SQL Server virtual machines, where the number of allocated vCPUs is greater than the number

of cores in the NUMA node, ESXi divides the CPU and memory of the VM into two or more virtual NUMA

nodes and places each vNUMA on a different physical NUMA node. The vNUMA topology is exposed to

the guest OS and SQL Server to take advantage of memory locality.

In the following example, there is a single VM with 12 vCPUs and 128 GB of RAM residing on the same

physical server that has 8 cores and 96 GB of RAM in each NUMA node, with a total of 16 CPU cores

and 192 GB RAM. The VM will be created as a wide VM with a vNUMA architecture that is exposed to the

underlying guest server OS.

Note By default, vNUMA is enabled only for a VM with nine or more vCPUs.

Figure 7. An Example of a VM with vNUMA
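The two placement examples can be reproduced with a simplified model. The sketch below is not the ESXi NUMA scheduler. It is a hypothetical helper that only compares a VM's size with the size of one physical NUMA node, using the numbers from the examples in this section.

```python
import math
from dataclasses import dataclass

@dataclass
class NumaNode:
    cores: int
    memory_gb: int

def fits_in_one_node(vcpus, memory_gb, node):
    """True when both the vCPUs and the memory of the VM fit in a single node."""
    return vcpus <= node.cores and memory_gb <= node.memory_gb

def numa_nodes_spanned(vcpus, node):
    """Physical NUMA nodes needed for the vCPUs alone (CPU count only)."""
    return math.ceil(vcpus / node.cores)

node = NumaNode(cores=8, memory_gb=96)       # one of two nodes in the example host
print(fits_in_one_node(6, 32, node))         # True: the 6 vCPU / 32 GB VM stays local
print(fits_in_one_node(12, 128, node))       # False: the 12 vCPU / 128 GB VM is wide
print(numa_nodes_spanned(12, node))          # 2
```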

3.3.5 Cores per Socket

It is possible to assign multiple virtual cores (vCores) per virtual socket (vSocket) to a VM. You can do this by setting the Cores per socket setting to more than one in the VM configuration editor.

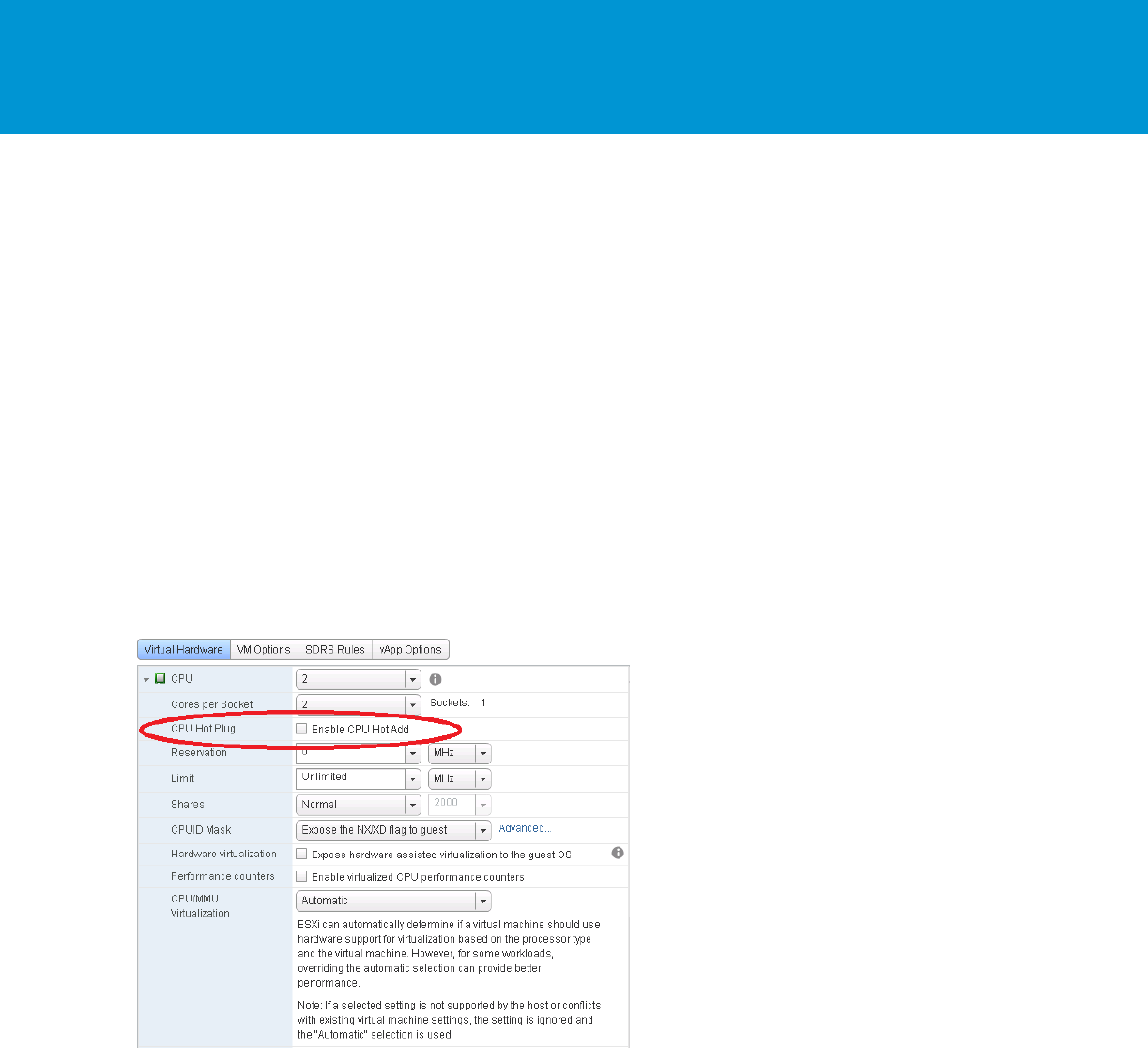

Figure 8. Cores per Sockets

For example, a VM can have 4 vSockets each with 4 vCores, or it can have 2 vSockets each with 8 vCores. Both options result in the VM having 16 vCores that are mapped to 16 pCores or logical Hyper-threads. This advanced setting was created to assist with licensing limitations for certain applications and operating systems that limit the number of cores and sockets. This is very useful for virtualized SQL Server deployments due to SQL Server’s upper limits on the number of allowed CPU sockets and cores. The limits differ by version and edition of the software, as detailed in the Microsoft article Compute Capacity Limits by Edition of SQL Server (https://msdn.microsoft.com/en-us/library/ms143760(v=sql.130).aspx).

In the preceding example, if this VM runs SQL Server 2014 Standard Edition, it is limited to the lesser of 4 sockets or 16 cores. Anything beyond 4 sockets or 16 cores is not recognized by SQL Server. To maximize the compute allocation to the VM, configure 4 vSockets with 4 vCores each (4x4), 2 vSockets with 8 vCores each (2x8), or 1 vSocket with 16 vCores (1x16). Each of these gives the VM access to 16 pCores.
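The effect of the "lesser of 4 sockets or 16 cores" limit on different vSocket layouts can be worked through in code. This is a minimal sketch: the function name is hypothetical, and the default limits are the SQL Server 2014 Standard Edition values quoted above. Other editions and versions need different values.

```python
def usable_cores(vsockets, cores_per_socket, max_sockets=4, max_cores=16):
    """Cores an edition-limited SQL Server instance can use for a given layout.

    Sockets beyond `max_sockets` are ignored, and the resulting
    core count is capped at `max_cores`.
    """
    visible_sockets = min(vsockets, max_sockets)
    return min(visible_sockets * cores_per_socket, max_cores)

# All layouts below present 16 vCores to the guest
for layout in [(4, 4), (2, 8), (1, 16), (16, 1), (8, 2)]:
    print(layout, usable_cores(*layout))
```

With these limits, the 4x4, 2x8, and 1x16 layouts expose all 16 cores to SQL Server, while 16x1 leaves only 4 usable cores and 8x2 leaves 8.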

Changing the number of vCores per vSocket has implications on the vNUMA topology and must be done

with care. In the following examples, it is assumed that there is a physical server with 2 physical CPU

sockets and 12 cores in each physical NUMA node:

• When the VM running SQL Server is assigned fewer CPU cores than the number of cores in the physical NUMA node, any configuration of cores per socket is acceptable because, even though vNUMA is enabled by default with 9 or more vCPUs, only one vNUMA node will be configured for the VM. On the 2x12 physical server in this example, neither a 2x4 nor a 1x8 VM configuration affects vNUMA because both are smaller than the number of physical cores per physical NUMA node (8<12). Generally, when the VM CPU count is lower than the physical NUMA node size, try to use the fewest number of vSockets possible.

• For configurations with more vCPUs than physical cores in the physical NUMA node, such as a 16-vCPU VM on a 2x12 host, always assign a number of vCPUs that can be divided evenly between physical NUMA nodes. Do not assign an odd number of vCPUs, as that can result in a sub-optimal configuration. Also, the following considerations need to be taken into account:

o Prior to vSphere 6.5, the cores per socket configuration directly affected the vNUMA topology of

the VM. Because of that, you must be aware of the underlying physical NUMA topology when

configuring the “cores per socket.” For example, assuming a requirement of 16 CPUs for a SQL

Server 2014 Standard VM, it will not be able to take advantage of all the vCPUs assigned to it if it

is configured with 1 core per socket (16 vSockets > limit of 4 sockets). In the example of a

physical server with 2x12, a VM with either 4x4 or 2x8 configuration is acceptable because it

allows ESXi to place each of the vNUMA nodes within a physical NUMA node.

o Starting with vSphere 6.5 the number of cores per socket does not affect the vNUMA

configuration by default. That means that any cores per sockets configuration can be set, and

ESXi always tries to create the optimized vNUMA configuration in the backend.

A few things to note:

• A VM that was upgraded from a version of vSphere earlier than 6.5 has the following advanced setting configured:

numa.vcpu.followcorespersocket = 1

This setting forces the old vNUMA behavior, which respects the cores per socket for vNUMA topology. This is done for backward compatibility. To make the VM follow the new behavior, change this setting to 0.

• Memory size is not considered for vNUMA topology, only the number of CPUs, even if the amount of RAM assigned to the VM is more than the physical memory in each physical NUMA node. A VM with more memory than the physical NUMA node, but with fewer CPU cores than the physical NUMA node, forces memory to be fetched remotely, degrading performance. If more memory is needed than the physical NUMA node provides, it is best to force the hypervisor to create a vNUMA topology, either by assigning more cores than the physical NUMA node has (and at least 9 by default), or by lowering the advanced setting numa.vcpu.min from 9 to the number of CPUs assigned to the virtual machine.

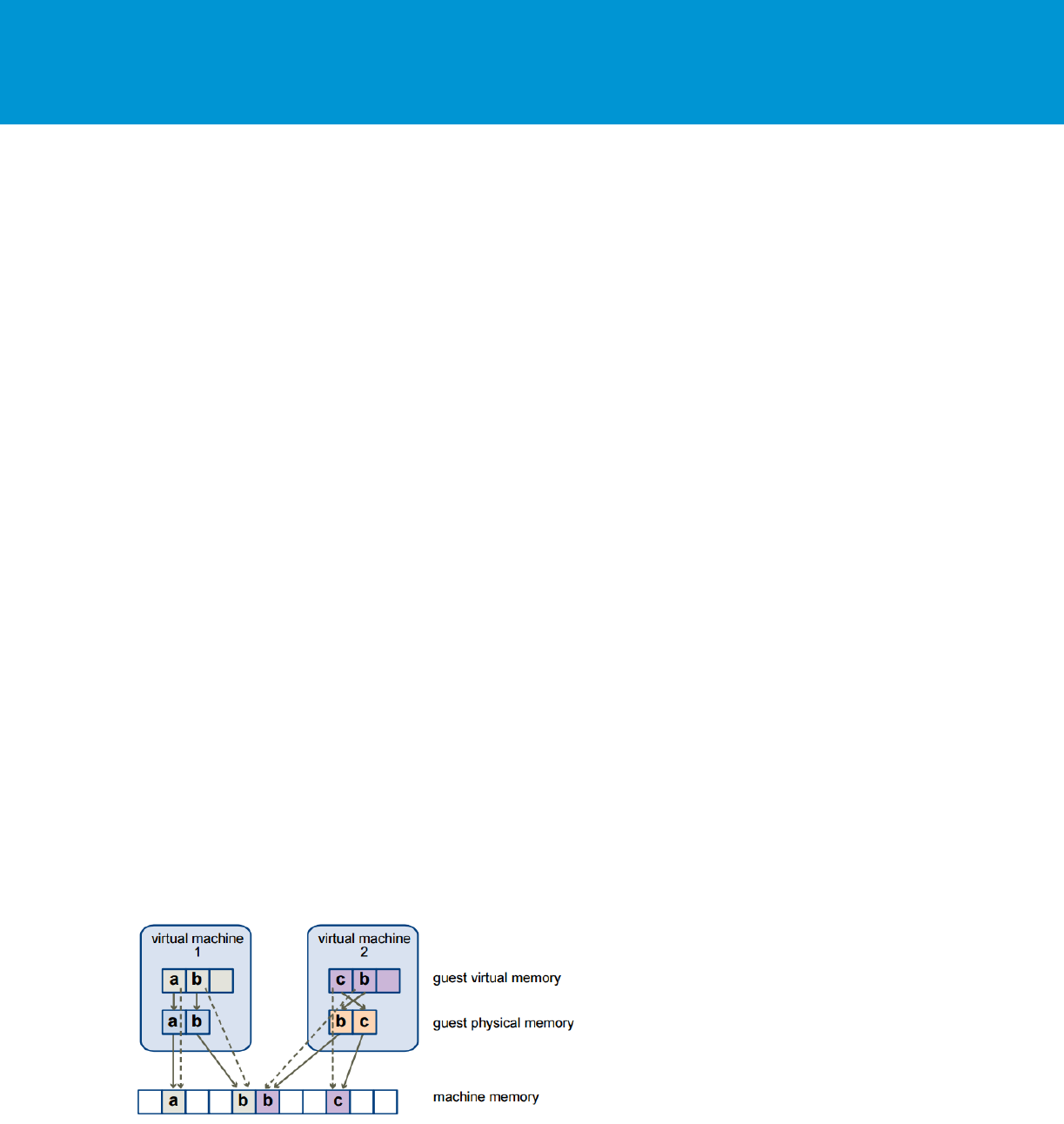

3.3.6 CPU Hot Plug

CPU hot plug is a feature that enables the VM administrator to add CPUs to the VM without having to

power it off. This allows adding CPU resources “on the fly” with no disruption to service. When CPU hot

plug is enabled on a VM, the vNUMA capability is disabled.

This means that SQL Server inside the VM cannot utilize vNUMA and will have degraded performance because the NUMA architecture presented to the guest does not reflect that of the underlying physical server.

There is a use case for hot plug, especially for dynamic resource management and the ability to dynamically add CPUs when vNUMA is not required (which is usually the case for smaller VMs). Therefore, VMware recommends not enabling CPU hot plug by default, especially for VMs that require vNUMA. Rightsizing the VM’s CPU is always a better choice than relying on CPU hot plug. The decision whether to use this feature should be made on a case-by-case basis and not implemented in the VM template used to deploy SQL Server. See the Knowledge Base article vNUMA is disabled if VCPU hotplug is enabled (2040375) at http://kb.vmware.com/kb/2040375.

Figure 9. Enabling CPU Hot Plug

3.3.7 CPU Affinity

CPU affinity restricts the assignment of a VM’s vCPUs to a subset of the available physical cores on the

physical server on which the VM resides.

VMware recommends not using CPU affinity in production because it limits the hypervisor’s ability to

efficiently schedule vCPUs on the physical server.

3.3.8 Virtual Machine Encryption

VM encryption enables encryption of the VM’s I/Os before they are stored in the virtual disk file. Because VMs save their data in files, one of the concerns, starting from the earliest days of virtualization, is that data can be accessed by an unauthorized entity or stolen by taking the VM’s disk files from the storage.

VM encryption is controlled on a per-VM basis and is implemented in the virtual vSCSI layer using an IOFilter API. This framework is implemented entirely in user space, which allows the I/Os to be isolated cleanly from the core architecture of the hypervisor.

VM encryption does not impose any specific hardware requirements, but using a processor that supports the AES-NI instruction set speeds up the encryption/decryption operation.

Any encryption feature consumes CPU cycles, and any I/O filtering mechanism adds at least a minimal amount of I/O latency.

The impact of such overheads largely depends on two aspects:

• The efficiency of implementation of the feature/algorithm.

• The capability of the underlying storage.

If the storage is slow (such as a locally attached spinning drive), the overhead caused by I/O filtering is

minimal, and has little impact on the overall I/O latency and throughput. However, if the underlying

storage is very high performance, any overhead added by the filtering layers can have a non-trivial impact

on I/O latency and throughput. This impact can be minimized by using processors that support the AES-

NI instruction set.
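The reasoning above is a matter of proportions: the same fixed filtering cost is a larger share of a fast device's latency than of a slow device's. The Python sketch below makes that concrete. The latency figures are purely illustrative assumptions, not measurements of VM encryption.

```python
def relative_latency_increase(device_latency_us, filter_overhead_us):
    """Filtering overhead as a fraction of the device's own I/O latency."""
    return filter_overhead_us / device_latency_us

# Hypothetical numbers: the same 20 microsecond overhead on two kinds of storage
spinning_disk = relative_latency_increase(device_latency_us=5000, filter_overhead_us=20)
fast_flash = relative_latency_increase(device_latency_us=100, filter_overhead_us=20)

print(f"spinning disk: {spinning_disk:.1%} added latency")
print(f"fast flash:    {fast_flash:.1%} added latency")
```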

For the latest performance study of VM encryption, see the following paper:

http://www.vmware.com/content/dam/digitalmarketing/vmware/en/pdf/techpaper/vm-encryption-

vsphere65-perf.pdf.

3.4 Memory Configuration

One of the most critical system resources for SQL Server is memory. Lack of memory resources for the

SQL Server database engine will induce Windows Server to page memory to disk, resulting in increased

disk I/O activities, which are considerably slower than accessing memory.

When a SQL Server deployment is virtualized, the hypervisor performs virtual memory management

without the knowledge of the guest OS and without interfering with the guest operating system’s own

memory management subsystem.

The guest OS, which is Windows Server in the case of SQL Server through SQL Server 2016, sees a

contiguous, zero-based, addressable physical memory space. The underlying machine memory on the

server used by each VM is not necessarily contiguous.

Figure 10. Memory Mappings Between Virtual, Guest, and Physical Memory

3.4.1 Memory Sizing Considerations

Memory sizing considerations include the following:

When designing for performance to prevent memory contention between VMs, avoid overcommitment

of memory at the ESXi host level (HostMem >= Sum of VMs memory – overhead). That means that if

a physical server has 256 GB of RAM, do not allocate more than that amount to the virtual machines

residing on it taking memory overhead into consideration as well.

Similar to CPU NUMA consideration, with Microsoft SQL Server, NUMA is less of a concern because

both vSphere and SQL Server support NUMA. As with SQL Server, vSphere has intelligent, adaptive

NUMA scheduling and memory placement policies that can manage all VMs transparently. If the VMs

memory is sized less than the amount available per NUMA node, ESXi will avoid remote memory

access as much as possible. However, if the VM is sized larger than the NUMA node memory size,

NUMA can be exposed to the underlying Windows Server guest OS with vNUMA allowing SQL

Server to take advantage of NUMA.

VMs require a certain amount of available overhead memory to power on. You should be aware of

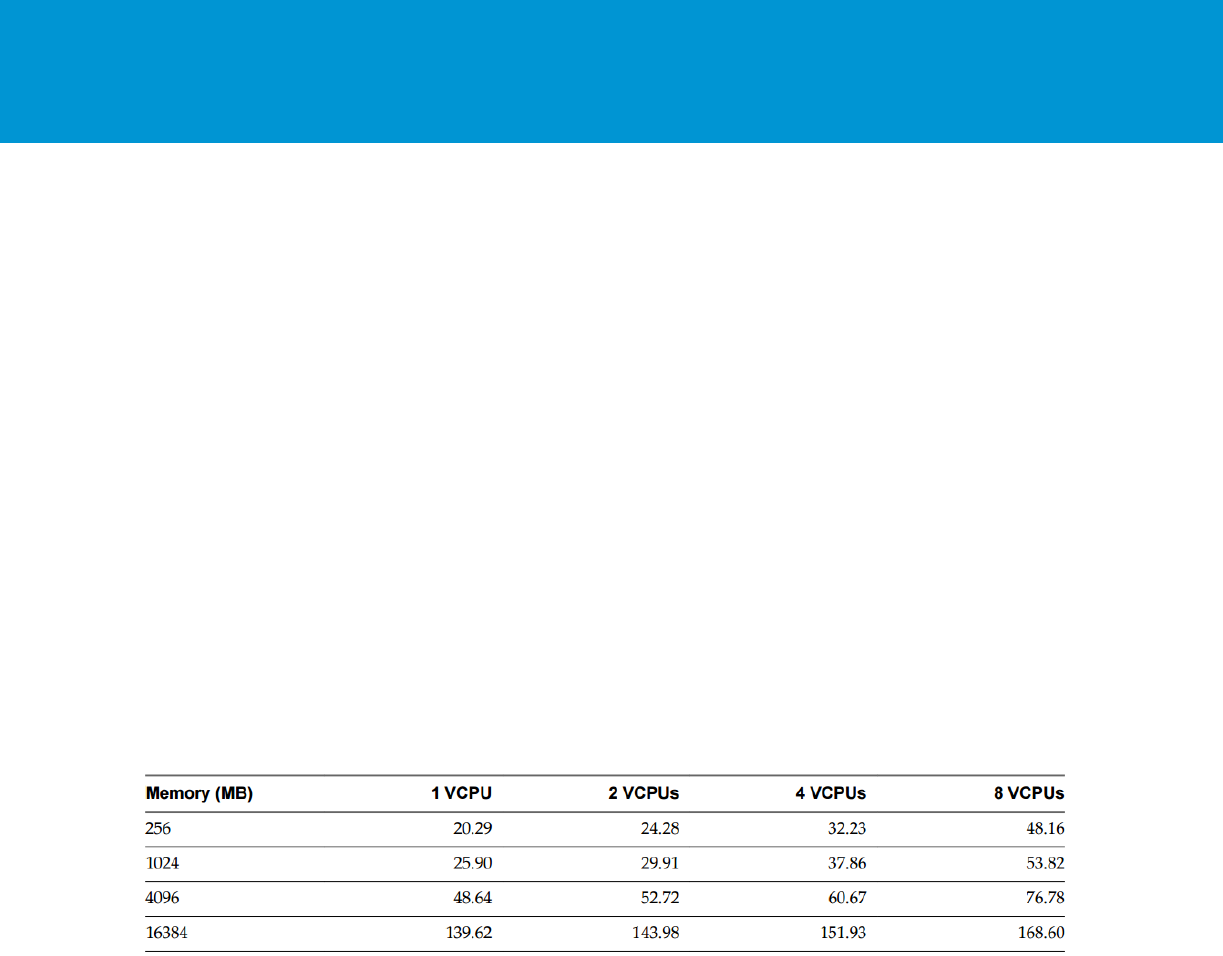

the amount of this overhead. The following table lists the amount of overhead memory a VM requires

to power on. After a VM is running, the amount of overhead memory it uses might differ from the

amount listed in the following snapshot.

This snapshot provides a sample of overhead memory values and does not apply to all possible

configurations. You can configure a VM to have up to 128 vCPUs and up to 4 TB of memory.

Figure 11. Sample Overhead Memory on Virtual Machines

For additional details, refer to vSphere Resource Management (https://pubs.vmware.com/vsphere-

60/topic/com.vmware.ICbase/PDF/vsphere-esxi-vcenter-server-60-resource-management-guide.pdf).
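The "no overcommitment" rule in the first consideration can be checked with simple arithmetic. The Python sketch below assumes hypothetical VM sizes and per-VM overhead values (real overhead depends on the VM configuration and should be taken from the vSphere documentation), and it ignores the memory that ESXi itself needs.

```python
def host_memory_headroom_gb(host_gb, vms):
    """Memory left on the host after every VM's configured memory plus overhead.

    `vms` is a list of (configured_gb, overhead_gb) tuples.
    A negative result means the host is overcommitted.
    """
    required = sum(configured + overhead for configured, overhead in vms)
    return host_gb - required

# 256 GB host, as in the example above; VM sizes and overheads are illustrative
vms = [(128, 1.0), (64, 0.5), (32, 0.3)]
headroom = host_memory_headroom_gb(256, vms)
print(f"headroom: {headroom:.1f} GB", "(overcommitted)" if headroom < 0 else "")
```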

3.4.2 Memory Reservation

When achieving adequate performance is the primary goal, consider setting the memory reservation equal to the provisioned memory. This eliminates the possibility of ballooning or swapping and guarantees that the VM is backed only by physical memory. When calculating the amount of memory to provision for the VM, use the following formulas:

VM Memory = SQL Max Server Memory + ThreadStack + OS Mem + VM Overhead

ThreadStack = SQL Max Worker Threads * ThreadStackSize

ThreadStackSize = 1 MB on x86, 2 MB on x64, 4 MB on IA64

OS Mem = 1 GB for every 4 CPU cores

Use SQL Server memory performance metrics and work with your database administrator to determine

the SQL Server maximum server memory size and maximum number of worker threads. Refer to the VM

overhead table for VM overhead.
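As a worked example, the formulas above translate directly into Python. The input values (24 GB max server memory, 576 worker threads, 8 cores, 300 MB of VM overhead) are hypothetical, and rounding the OS memory up to the next whole group of 4 cores is an assumption of this sketch.

```python
import math

# Thread stack sizes in MB, as listed in the formulas above
THREAD_STACK_MB = {"x86": 1, "x64": 2, "IA64": 4}

def vm_memory_mb(max_server_memory_mb, max_worker_threads, cpu_cores,
                 vm_overhead_mb, arch="x64"):
    """VM Memory = SQL Max Server Memory + ThreadStack + OS Mem + VM Overhead."""
    thread_stack_mb = max_worker_threads * THREAD_STACK_MB[arch]
    # 1 GB for every 4 CPU cores, rounded up (assumption)
    os_mem_mb = math.ceil(cpu_cores / 4) * 1024
    return max_server_memory_mb + thread_stack_mb + os_mem_mb + vm_overhead_mb

total = vm_memory_mb(
    max_server_memory_mb=24 * 1024,   # 24 GB max server memory
    max_worker_threads=576,
    cpu_cores=8,
    vm_overhead_mb=300,
)
print(total, "MB")   # 24576 + 1152 + 2048 + 300 = 28076 MB
```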

Figure 12. Setting Memory Reservation

Note Setting memory reservations might limit vSphere vMotion. A VM can be migrated only if the target

ESXi host has unreserved memory equal to or greater than the size of the reservation.

3.4.3 The Balloon Driver

The ESXi hypervisor is not aware of the guest Windows Server memory management tables of used and free memory. When the VM asks the hypervisor for memory, ESXi assigns a physical memory page to accommodate that request. When the guest OS stops using that page, it releases it by recording it in the operating system’s free memory table, but it does not delete the actual data from the page. Because the ESXi hypervisor does not have access to the operating system’s free and used tables, that memory page might still appear to be in use from the hypervisor’s point of view. If there is memory pressure on the hypervisor host and the hypervisor needs to reclaim some memory from VMs, it uses the balloon driver. The balloon driver, which is installed with VMware Tools™, requests a large amount of memory from the guest OS. The guest OS releases memory from the free list or memory that has been idle. That way, memory is paged to disk based on the OS algorithm and requirements and not the hypervisor’s. Memory is reclaimed from VMs that have fewer proportional shares and given to the VMs with more proportional shares. This is an intelligent way for the hypervisor to reclaim memory from VMs based on a preconfigured policy called the proportional share mechanism.

When designing SQL Server for performance, the goal is to eliminate any chance of paging. Disable the ability for the hypervisor to reclaim memory from the guest OS by setting the

memory reservation of the VM to the size of the provisioned memory. The recommendation is to leave the

balloon driver installed for corner cases where it might be needed to prevent loss of service. As an

example of when the balloon driver might be needed, assume a vSphere cluster of 16 physical hosts that

is designed for a 2-host failure. In case of a power outage that causes a failure of 4 hosts, the cluster

might not have the required resources to power on the failed VMs. In that case, the balloon driver can

reclaim memory by forcing the guest operating systems to page, allowing the important database servers

to continue running in the least disruptive way to the business.
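The failure scenario above can be quantified with a rough model. The sketch below assumes identical hosts, a hypothetical 512 GB of memory per host, and a cluster whose VM memory demand fills exactly the capacity that remains after the tolerated number of failures. It estimates how much memory would have to be reclaimed (for example, by ballooning) to keep all VMs running.

```python
def memory_shortfall_gb(hosts, host_memory_gb, tolerated_failures, actual_failures):
    """Memory that must be reclaimed when more hosts fail than the design allows.

    Assumes the cluster is loaded up to its design limit, that is, VM demand
    equals the capacity of (hosts - tolerated_failures) hosts.
    """
    demand = (hosts - tolerated_failures) * host_memory_gb
    remaining = (hosts - actual_failures) * host_memory_gb
    return max(0, demand - remaining)

# 16 hosts designed for a 2-host failure; 4 hosts fail
print(memory_shortfall_gb(16, 512, tolerated_failures=2, actual_failures=4))  # 1024
# Within the design limit there is no shortfall
print(memory_shortfall_gb(16, 512, tolerated_failures=2, actual_failures=2))  # 0
```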

Note Ballooning is sometimes confused with Microsoft’s Hyper-V dynamic memory feature. The two

are not the same and Microsoft recommendations to disable dynamic memory for SQL Server

deployments do not apply for the VMware balloon driver.

3.4.4 Memory Hot Plug

Similar to CPU hot plug, memory hot plug enables a VM administrator to add memory to the VM with no

down time. Before vSphere 6, when memory hot add was configured on a VM with vNUMA enabled, it

would always add it to node0, creating NUMA imbalance. With vSphere 6 and later, when enabling

memory hot plug and adding memory to the VM, the memory will be added evenly to both vNUMA nodes

which makes this feature usable for more use cases. VMware recommends using memory hot plug in

cases where memory consumption patterns cannot be easily and accurately predicted only with vSphere

6 and later. After memory has been added to the VM, increase the max memory setting on the database

if one has been set. This can be done without requiring a server reboot or a restart of the SQL Server

service. As with CPU hot plug, it is preferable to rely on rightsizing rather than on memory hot plug. The decision

whether to use this feature should be made on a case-by-case basis and not implemented in the VM

template used to deploy SQL Server.
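As a minimal sketch of the follow-up step described above, the Python snippet below builds the T-SQL that raises max server memory after memory has been hot added. The function name and the 4 GB operating system headroom are illustrative assumptions, not recommendations from this guide. The `sp_configure` option 'max server memory (MB)' is an advanced option, so the sketch enables 'show advanced options' first.

```python
def max_server_memory_tsql(vm_memory_gb, os_headroom_gb=4):
    """Return T-SQL that sets max server memory for a VM of the given size.

    os_headroom_gb is a hypothetical amount left for the guest OS and other
    processes; size it for your own environment.
    """
    if vm_memory_gb <= os_headroom_gb:
        raise ValueError("VM memory must exceed the OS headroom")
    max_memory_mb = (vm_memory_gb - os_headroom_gb) * 1024
    return "\n".join([
        "EXEC sp_configure 'show advanced options', 1;",
        "RECONFIGURE;",
        f"EXEC sp_configure 'max server memory (MB)', {max_memory_mb};",
        "RECONFIGURE;",
    ])


# Example: a VM grown from 64 GB to 96 GB by memory hot plug.
print(max_server_memory_tsql(96))
```

The generated statements can be run against the instance with any SQL client; the new value is applied by RECONFIGURE while the service keeps running.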

Figure 13. Setting Memory Hot Plug

3.5 Storage Configuration

Physical SQL Server environments use resources that are isolated and not shared. When you move to

virtualized SQL Server deployments, a shared storage model strategy provides many benefits, such as

more effective storage resource utilization, reduced storage white space, better provisioning, and

improved mobility using vSphere vMotion and vSphere Storage vMotion. However, some considerations

differ under virtualization: make sure that a single datastore does not become a single point of

failure, and keep in mind that datastores can be shared between multiple SQL Server deployments.

Storage configuration is critical to any successful database deployment, especially in virtual environments

where you might consolidate multiple SQL Server VMs on a single ESXi host. Your storage subsystem

must provide sufficient I/O throughput as well as storage capacity to accommodate the cumulative needs

of all VMs running on your ESXi hosts.
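A simple way to reason about cumulative needs is to add up the per-VM requirements and compare them with what the storage subsystem can deliver. The sketch below does this in Python; the VM names, IOPS figures, and capacities are hypothetical, and summing peak IOPS is a deliberately conservative assumption because peaks do not always coincide.

```python
from dataclasses import dataclass


@dataclass
class VmStorageNeed:
    name: str
    peak_iops: int
    capacity_gb: int


def cumulative_demand(vms):
    """Sum peak IOPS and capacity across all VMs sharing the storage."""
    total_iops = sum(vm.peak_iops for vm in vms)
    total_capacity_gb = sum(vm.capacity_gb for vm in vms)
    return total_iops, total_capacity_gb


def storage_is_sufficient(vms, available_iops, available_capacity_gb):
    """True when the storage covers both the IOPS and the capacity demand."""
    total_iops, total_capacity_gb = cumulative_demand(vms)
    return total_iops <= available_iops and total_capacity_gb <= available_capacity_gb


# Hypothetical SQL Server VMs consolidated on the same storage.
vms = [
    VmStorageNeed("sql-oltp-01", peak_iops=12000, capacity_gb=2000),
    VmStorageNeed("sql-dw-01", peak_iops=8000, capacity_gb=6000),
    VmStorageNeed("sql-dev-01", peak_iops=1500, capacity_gb=500),
]
print(cumulative_demand(vms))
print(storage_is_sufficient(vms, available_iops=25000, available_capacity_gb=10000))
```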

For information about best practices for SQL Server storage configuration, refer to Microsoft’s Storage

Top Ten Practices (http://technet.microsoft.com/en-us/library/cc966534.aspx). Follow these

recommendations along with the best practices in this guide.

3.5.1 vSphere Storage Options

vSphere provides several options for storage configuration. The most widely used is a

VMFS-formatted datastore on a central storage system, but that is not the only option. Today, storage

admins can use newer technologies such as VMware vSphere Virtual Volumes™, which makes VMs

native objects on the storage system. Other options

include hyper-converged solutions, such as VMware vSAN™, and all-flash arrays, such as EMC’s

XtremIO. This section covers the different storage options that exist for virtualized SQL Server

deployments running on vSphere.

3.5.1.1. VMFS on Central Storage Subsystem

This is the most commonly used option today among VMware customers. As illustrated in the following

figure, the storage array is at the bottom layer, consisting of physical disks presented as logical disks

(storage array volumes or LUNs) to vSphere. This is the same as in the physical deployment. The storage

array LUNs are then formatted as VMFS volumes by the ESXi hypervisor and that is where the virtual

disks reside. These virtual disks are then presented to the guest OS.

Figure 14. VMware Storage Virtualization Stack

3.5.1.2. VMware Virtual Machine File System (VMFS)

VMFS is a clustered file system that provides storage virtualization optimized for VMs. Each VM is

encapsulated in a small set of files and VMFS is the default storage system for these files on physical

SCSI-based disks and partitions. VMware supports Fibre Channel and iSCSI protocols for VMFS.

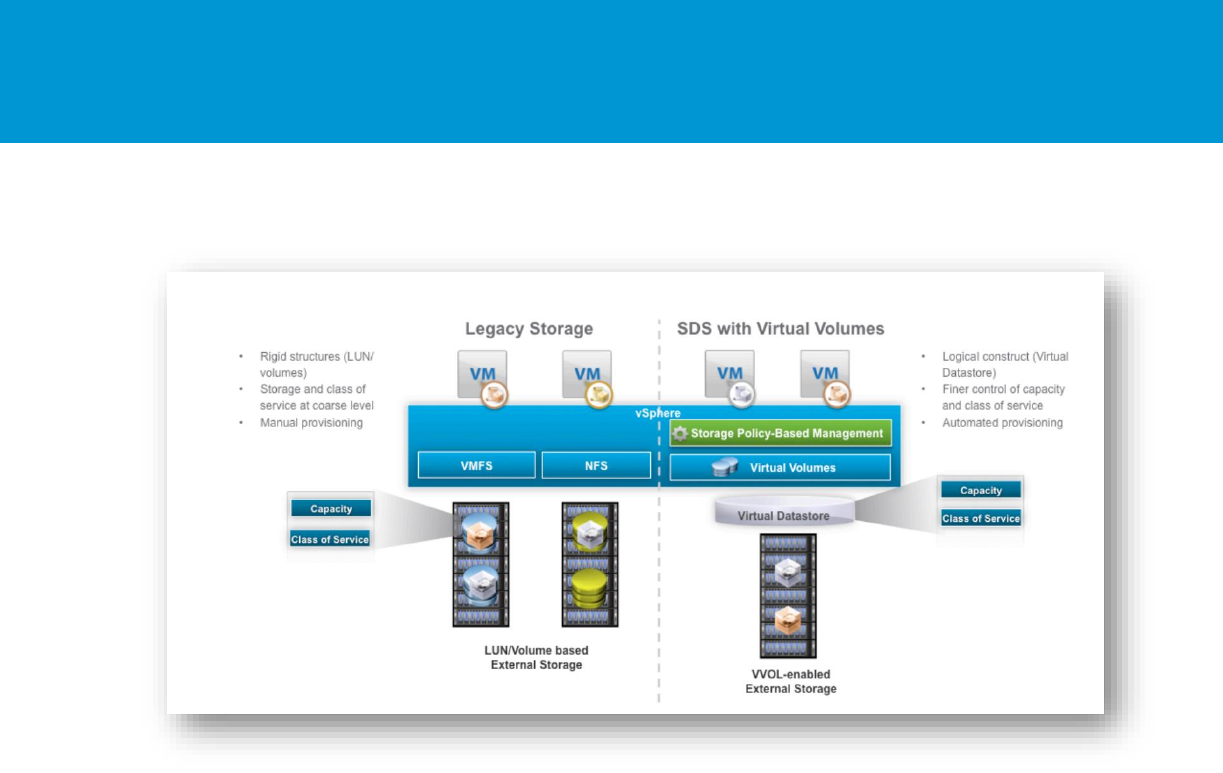

3.5.1.3. vSphere Virtual Volumes

vSphere Virtual Volumes implements the core tenets of the VMware software-defined storage vision to

enable a fundamentally more efficient operational model for external storage in virtualized environments,

centering it on the application instead of the physical infrastructure. vSphere Virtual Volumes enables

application-specific requirements to drive storage provisioning decisions while leveraging the rich set of

capabilities provided by existing storage arrays. Some of the primary benefits delivered by vSphere

Virtual Volumes are focused on operational efficiencies and flexible consumption models.

vSphere Virtual Volumes is a new VM disk management and integration framework that exposes virtual

disks as primary units of data management for storage arrays. This new framework enables array-based

operations at the virtual disk level that can be precisely aligned to application boundaries, eliminating the

use of LUNs and VMFS datastores. vSphere Virtual Volumes is composed of these key implementations:

Flexible consumption at the logical level – vSphere Virtual Volumes virtualizes SAN and NAS devices

by abstracting physical hardware resources into logical pools of capacity (represented as a virtual

datastore in vSphere) that can be more flexibly consumed and configured to span a portion of one or

several storage arrays.

Finer control at the VM level – vSphere Virtual Volumes defines a new virtual disk container (the

virtual volume) that is independent of the underlying physical storage representation (LUN, file

system, object, and so on). In other words, with vSphere Virtual Volumes, the virtual disk becomes

the primary unit of data management at the array level. This turns the virtual datastore into a VM-

centric pool of capacity. It becomes possible to execute storage operations with VM granularity and to

provision native array-based data services, such as compression, snapshots, de-duplication,

encryption, and so on to individual VMs. This allows admins to provide the correct storage service

levels to each individual VM.

Efficient operations through automation – Storage Policy-Based Management (SPBM) captures storage service level requirements

(capacity, performance, availability, and so on) in the form of logical templates (policies) to which VMs

are associated. SPBM automates VM placement by identifying available datastores that meet policy

requirements, and coupled with vSphere Virtual Volumes, it dynamically instantiates necessary data

services. Through policy enforcement, SPBM also automates service-level monitoring and

compliance throughout the lifecycle of the VM.
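The policy-driven placement idea can be illustrated with a small conceptual model. The sketch below is not the SPBM API; the class names, capability labels, and datastore names are all hypothetical and only show how a policy (a logical template of requirements) can be matched against the capabilities that datastores advertise.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Datastore:
    name: str
    free_gb: int
    capabilities: frozenset  # data services the datastore advertises


@dataclass(frozen=True)
class StoragePolicy:
    name: str
    min_free_gb: int
    required_capabilities: frozenset


def compliant_datastores(policy, datastores):
    """Return names of datastores that satisfy every requirement in the policy."""
    return [
        ds.name
        for ds in datastores
        if ds.free_gb >= policy.min_free_gb
        and policy.required_capabilities <= ds.capabilities
    ]


# Hypothetical inventory and policy for a production SQL Server VM.
datastores = [
    Datastore("vvol-gold", 4000, frozenset({"snapshots", "encryption", "replication"})),
    Datastore("vvol-silver", 8000, frozenset({"snapshots", "compression"})),
    Datastore("vvol-bronze", 500, frozenset({"snapshots", "encryption"})),
]
sql_prod = StoragePolicy("sql-prod", 1000, frozenset({"snapshots", "encryption"}))
print(compliant_datastores(sql_prod, datastores))
```

In this model, a VM associated with the hypothetical `sql-prod` policy would only be placed on a datastore that appears in the returned list; rerunning the same check later corresponds to the compliance monitoring described above.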

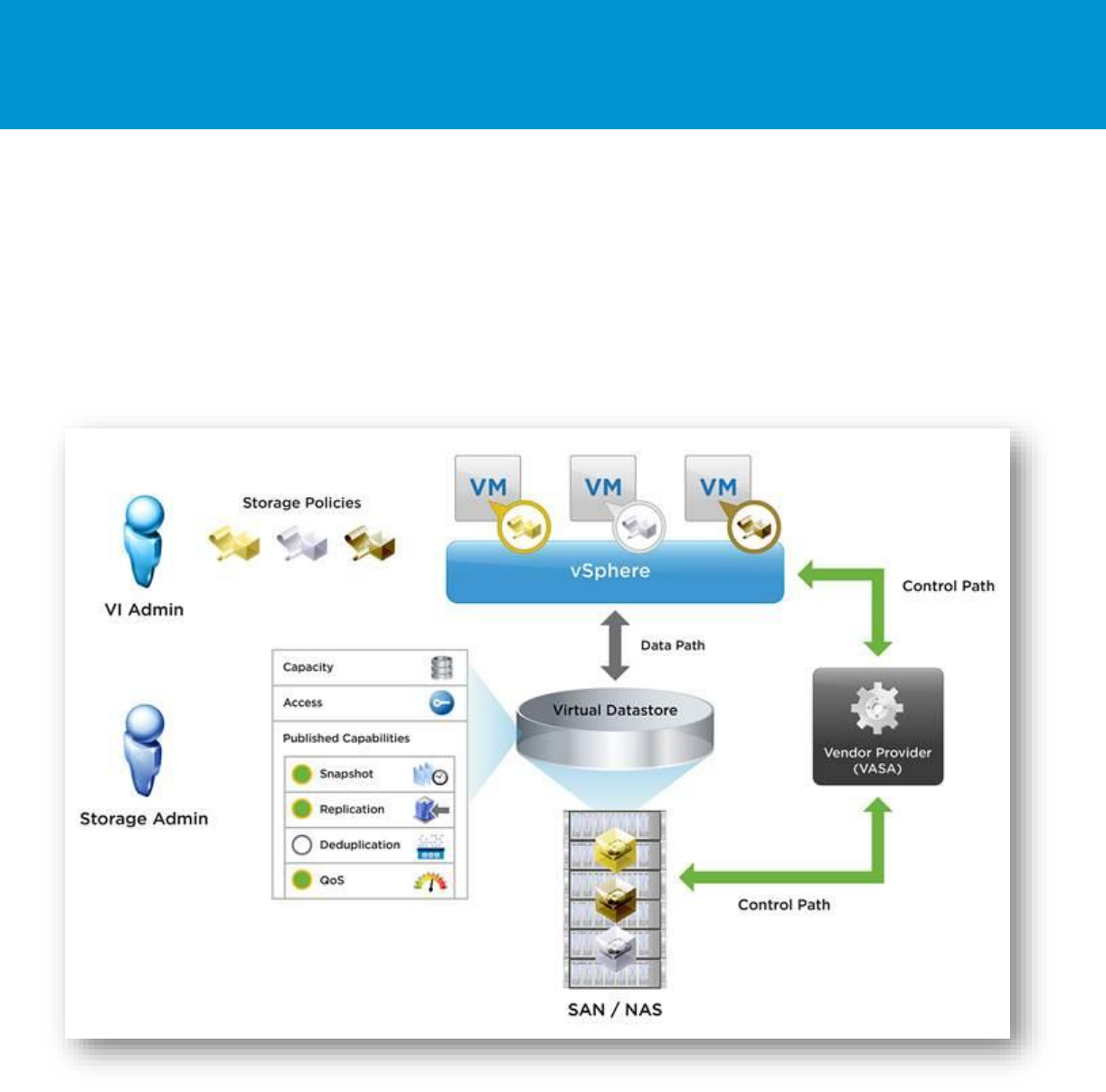

Figure 15. vSphere Virtual Volumes

The goal of vSphere Virtual Volumes is to provide a simpler operational model for managing VMs in

external storage while leveraging the rich set of capabilities available in storage arrays.

For more information about virtual volumes, see the What’s New: vSphere Virtual Volumes white paper at

https://www.vmware.com/files/pdf/products/virtualvolumes/VMware-Whats-New-vSphere-Virtual-Volumes.pdf.

vSphere Virtual Volumes capabilities help with many of the challenges that large databases are facing:

Business-critical virtualized databases need to meet strict SLAs for performance, and storage is

usually the slowest component compared to RAM and CPU and even network.

Database size is growing, while at the same time there is an increasing need to reduce backup

windows and the impact on system performance.

There is a regular need to clone and refresh databases from production to QA and other

environments. The size of modern databases makes it harder to clone and refresh data from

production to other environments.

Databases of different levels of criticality need different storage performance characteristics and

capabilities.

It is a challenge to back up multi-terabyte databases due to the restricted backup windows and the data

churn, which itself can be quite large. It is not feasible to make full backups of these multi-terabyte

databases in the allotted backup windows.

Backup solutions, such as native SQL Server backup, provide a fine level of granularity for database

backups but they are not always the fastest.

A VM snapshot containing the SQL Server backup file is ideal for solving this issue, but as indicated in

the Knowledge Base article A snapshot removal can stop a virtual machine for a long time (1002836) at

http://kb.vmware.com/kb/1002836, the brief stun of the VM during snapshot removal can potentially cause performance

issues.

Storage-based snapshots would be the fastest, but unfortunately storage snapshots are taken at the

datastore and LUN levels and not at the VM level. Therefore, there is no VMDK-level granularity with

traditional storage-level snapshots.

vSphere Virtual Volumes is an ideal solution that combines snapshot capabilities at the storage level with

the granularity of a VM level snapshot.

Figure 16. vSphere Virtual Volumes High Level Architecture

With vSphere Virtual Volumes, you can also set up different storage policies for different VMs. These

policies instantiate themselves on the physical storage system, enabling VM level granularity for

performance and other data services.

When virtualizing SQL Server on a SAN using vSphere Virtual Volumes as the underlying technology, the

best practices and guidelines remain the same as when using a VMFS datastore.

Make sure that the physical storage on which the VM’s virtual disks reside can accommodate the

requirements of the SQL Server implementation with regard to RAID, I/O, latency, queue depth, and so

on, as detailed in the storage best practices in this document.

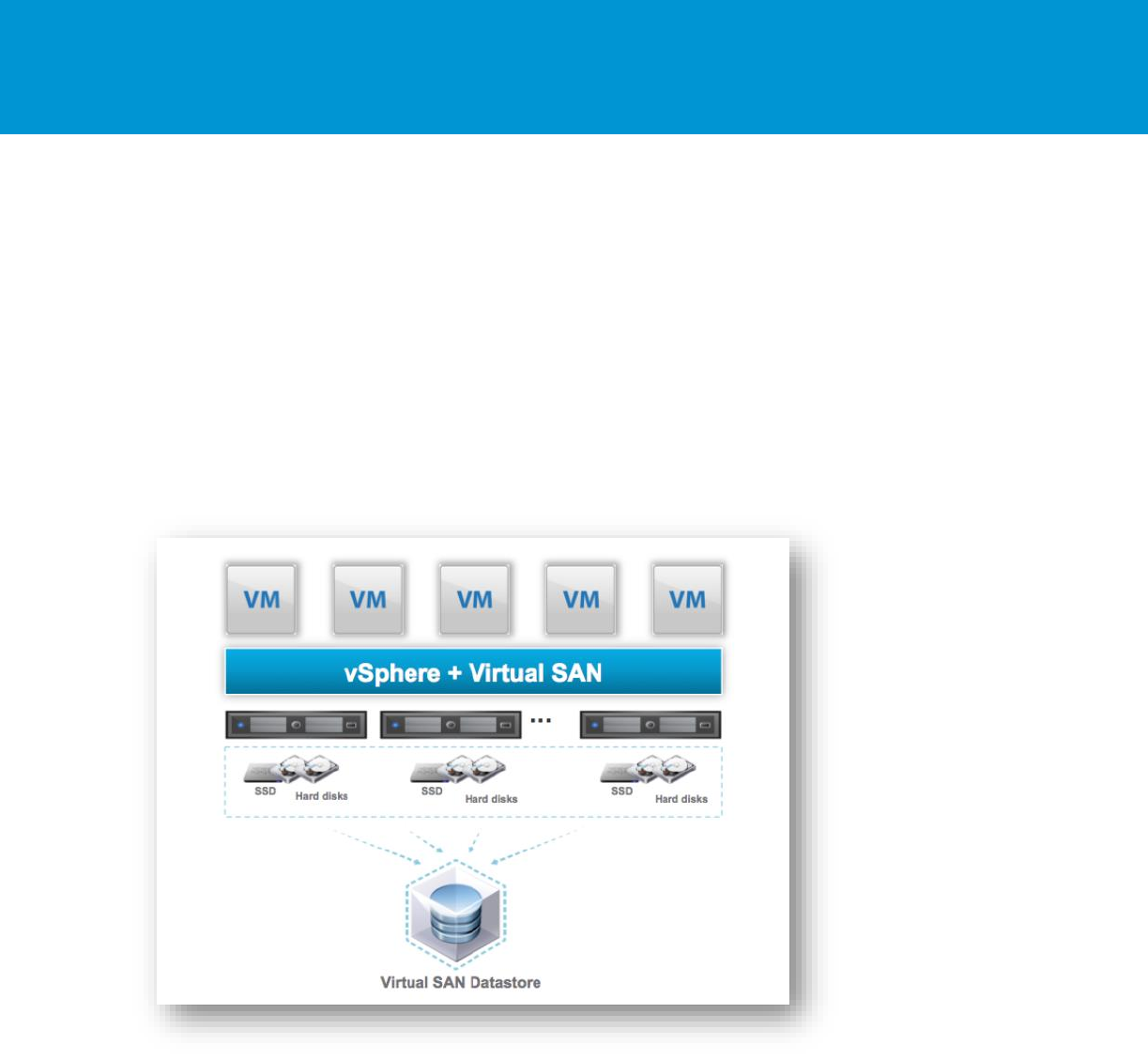

3.5.1.4. vSAN

vSAN is the VMware software-defined storage solution for hyper-converged infrastructure, a software-

driven architecture that delivers tightly integrated computing, networking, and shared storage from x86

servers. vSAN delivers high performance, highly resilient shared storage. vSAN provides enterprise-class

storage services for virtualized production environments along with predictable scalability and all-flash

performance at a fraction of the price of traditional, purpose-built storage arrays. Like vSphere, vSAN

provides users the flexibility and control to choose from a wide range of hardware options and easily

deploy and manage them for a variety of IT workloads and use cases.

Figure 17. VMware vSAN

vSAN can be configured as hybrid or all-flash storage. In a hybrid architecture, vSAN

uses flash-based devices for performance and magnetic disks for capacity. In an all-flash vSAN

architecture, vSAN uses flash-based devices (PCIe SSD or SAS/SATA SSD) for both the write buffer

and persistent storage. A read cache is neither available nor required in an all-flash architecture. vSAN is a

distributed object storage system that leverages the SPBM feature to deliver centrally managed,

application-centric storage services and capabilities. Administrators can specify storage attributes, such

as capacity, performance, and availability as a policy on a per-VMDK level. The policies dynamically self-

tune and load balance the system so that each VM has the appropriate level of resources.

vSAN 6.1 introduced the stretched cluster feature. vSAN stretched clusters provide customers with the

ability to deploy a single vSAN cluster across multiple data centers. vSAN stretched cluster is a specific

configuration implemented in environments where disaster or downtime avoidance is a key requirement.

vSAN stretched cluster builds on the foundation of fault domains. The fault domain feature introduced

rack awareness in vSAN 6.0. The feature allows customers to group multiple hosts into failure zones

across multiple server racks to ensure that replicas of VM objects are not provisioned onto the same