Big Data And Social Science (Statistics In The Behavioral Sciences Series) Ian Foster, Rayid Ghani, Ron S. Jarmin

(Statistics%20in%20the%20social%20and%20behavioral%20sciences%20series)%20Ian%20Foster%2C%20Rayid%20Ghani%2C%20Ron%20S.%20Jarmin

User Manual:

Open the PDF directly: View PDF ![]() .

.

Page Count: 377 [warning: Documents this large are best viewed by clicking the View PDF Link!]

- Cover

- Half Title

- Title

- Copyright

- Contents

- Preface

- Editors

- Contributors

- 1: Introduction

- I: Capture and Curation

- 2: Working with Web Data and APIs

- 2.1: Introduction

- 2.2: Scraping information from the web

- 2.3: New data in the research enterprise

- 2.4: A functional view

- 2.5: Programming against an API

- 2.6: Using the ORCID API via a wrapper

- 2.7: Quality, scope, and management

- 2.8: Integrating data from multiple sources

- 2.9: Working with the graph of relationships

- 2.10: Bringing it together: Tracking pathways to impact

- 2.11: Summary

- 2.12: Resources

- 2.13: Acknowledgements and copyright

- 3: Record Linkage

- 4: Databases

- 5: Programming with Big Data

- 2: Working with Web Data and APIs

- II: Modeling and Analysis

- 6: Machine Learning

- 6.1: Introduction

- 6.2: What is machine learning?

- 6.3: The machine learning process

- 6.4: Problem formulation: Mapping a problem to machine learning methods

- 6.5: Methods

- 6.6: Evaluation

- 6.7: Practical tips

- 6.8: How can social scientists benefit from machine learning?

- 6.9: Advanced topics

- 6.10: Summary

- 6.11: Resources

- 7: Text Analysis

- 8: Networks: The Basics

- 6: Machine Learning

- III: Inference and Ethics

- 9: Information Visualization

- 10: Errors and Inference

- 10.1: Introduction

- 10.2: The total error paradigm

- 10.3: Illustrations of errors in big data

- 10.4: Errors in big data analytics

- 10.5: Some methods for mitigating, detecting, and compensating for errors

- 10.6: Summary

- 10.7: Resources

- 11: Privacy and Confidentiality

- 12: Workbooks

- Bibliography�������������������

- Index������������

BIG DATA AND

SOCIAL SCIENCE

A Practical Guide to Methods and Tools

Statistics in the Social and Behavioral Sciences Series

Aims and scope

Large and complex datasets are becoming prevalent in the social and behavioral

sciences and statistical methods are crucial for the analysis and interpretation of such

data. This series aims to capture new developments in statistical methodology with

particular relevance to applications in the social and behavioral sciences. It seeks to

promote appropriate use of statistical, econometric and psychometric methods in

these applied sciences by publishing a broad range of reference works, textbooks and

handbooks.

The scope of the series is wide, including applications of statistical methodology in

sociology, psychology, economics, education, marketing research, political science,

criminology, public policy, demography, survey methodology and ofcial statistics. The

titles included in the series are designed to appeal to applied statisticians, as well as

students, researchers and practitioners from the above disciplines. The inclusion of real

examples and case studies is therefore essential.

Jeff Gill

Washington University, USA

Wim J. van der Linden

Pacic Metrics, USA

Steven Heeringa

University of Michigan, USA

J. Scott Long

Indiana University, USA

Series Editors

Chapman & Hall/CRC

Tom Snijders

Oxford University, UK

University of Groningen, NL

Published Titles

Analyzing Spatial Models of Choice and Judgment with R

David A. Armstrong II, Ryan Bakker, Royce Carroll, Christopher Hare, Keith T. Poole, and Howard Rosenthal

Analysis of Multivariate Social Science Data, Second Edition

David J. Bartholomew, Fiona Steele, Irini Moustaki, and Jane I. Galbraith

Latent Markov Models for Longitudinal Data

Francesco Bartolucci, Alessio Farcomeni, and Fulvia Pennoni

Statistical Test Theory for the Behavioral Sciences

Dato N. M. de Gruijter and Leo J. Th. van der Kamp

Multivariable Modeling and Multivariate Analysis for the Behavioral Sciences

Brian S. Everitt

Multilevel Modeling Using R

W. Holmes Finch, Jocelyn E. Bolin, and Ken Kelley

Big Data and Social Science: A Practical Guide to Methods and Tools

Ian Foster, Rayid Ghani, Ron S. Jarmin, Frauke Kreuter, and Julia Lane

Ordered Regression Models: Parallel, Partial, and Non-Parallel Alternatives

Andrew S. Fullerton and Jun Xu

Bayesian Methods: A Social and Behavioral Sciences Approach, Third Edition

Jeff Gill

Multiple Correspondence Analysis and Related Methods

Michael Greenacre and Jorg Blasius

Applied Survey Data Analysis

Steven G. Heeringa, Brady T. West, and Patricia A. Berglund

Informative Hypotheses: Theory and Practice for Behavioral and Social Scientists

Herbert Hoijtink

Generalized Structured Component Analysis: A Component-Based Approach to Structural Equation Modeling

Heungsun Hwang and Yoshio Takane

Bayesian Psychometric Modeling

Roy Levy and Robert J. Mislevy

Statistical Studies of Income, Poverty and Inequality in Europe: Computing and Graphics in R Using EU-SILC

Nicholas T. Longford

Foundations of Factor Analysis, Second Edition

Stanley A. Mulaik

Linear Causal Modeling with Structural Equations

Stanley A. Mulaik

Age–Period–Cohort Models: Approaches and Analyses with Aggregate Data

Robert M. O’Brien

Handbook of International Large-Scale Assessment: Background, Technical Issues, and Methods of Data

Analysis

Leslie Rutkowski, Matthias von Davier, and David Rutkowski

Generalized Linear Models for Categorical and Continuous Limited Dependent Variables

Michael Smithson and Edgar C. Merkle

Incomplete Categorical Data Design: Non-Randomized Response Techniques for Sensitive Questions in

Surveys

Guo-Liang Tian and Man-Lai Tang

Handbook of Item Response Theory, Volume 1: Models

Wim J. van der Linden

Handbook of Item Response Theory, Volume 2: Statistical Tools

Wim J. van der Linden

Handbook of Item Response Theory, Volume 3: Applications

Wim J. van der Linden

Computerized Multistage Testing: Theory and Applications

Duanli Yan, Alina A. von Davier, and Charles Lewis

Statistics in the Social and Behavioral Sciences Series

Chapman & Hall/CRC

BIG DATA AND

SOCIAL SCIENCE

A Practical Guide to Methods and Tools

Edited by

Ian Foster

University of Chicago

Argonne National Laboratory

Rayid Ghani

University of Chicago

Ron S. Jarmin

U.S. Census Bureau

Frauke Kreuter

University of Maryland

University of Manheim

Institute for Employment Research

Julia Lane

New York University

American Institutes for Research

CRC Press

Taylor & Francis Group

6000 Broken Sound Parkway NW, Suite 300

Boca Raton, FL 33487-2742

© 2017 by Taylor & Francis Group, LLC

CRC Press is an imprint of Taylor & Francis Group, an Informa business

No claim to original U.S. Government works

Printed on acid-free paper

Version Date: 20160414

International Standard Book Number-13: 978-1-4987-5140-7 (Hardback)

This book contains information obtained from authentic and highly regarded sources. Reasonable efforts have been made to publish reliable data and information, but

the author and publisher cannot assume responsibility for the validity of all materials or the consequences of their use. The authors and publishers have attempted to

trace the copyright holders of all material reproduced in this publication and apologize to copyright holders if permission to publish in this form has not been obtained.

If any copyright material has not been acknowledged please write and let us know so we may rectify in any future reprint.

Except as permitted under U.S. Copyright Law, no part of this book may be reprinted, reproduced, transmitted, or utilized in any form by any electronic, mechanical,

or other means, now known or hereafter invented, including photocopying, microfilming, and recording, or in any information storage or retrieval system, without

written permission from the publishers.

For permission to photocopy or use material electronically from this work, please access www.copyright.com (http://www.copyright.com/) or contact the Copyright

Clearance Center, Inc. (CCC), 222 Rosewood Drive, Danvers, MA 01923, 978-750-8400. CCC is a not-for-profit organization that provides licenses and registration for a

variety of users. For organizations that have been granted a photocopy license by the CCC, a separate system of payment has been arranged.

Trademark Notice: Product or corporate names may be trademarks or registered trademarks, and are used only for identification and explanation without intent to

infringe.

Library of Congress Cataloging‑in‑Publication Data

Names: Foster, Ian, 1959- editor.

Title: Big data and social science : a practical guide to methods and tools /

edited by Ian Foster, University of Chicago, Illinois, USA, Rayid Ghani,

University of Chicago, Illinois, USA, Ron S. Jarmin, U.S. Census Bureau,

USA, Frauke Kreuter, University of Maryland, USA, Julia Lane, New York

University, USA.

Description: Boca Raton, FL : CRC Press, [2017] | Series: Chapman & Hall/CRC

statistics in the social and behavioral sciences series | Includes

bibliographical references and index.

Identifiers: LCCN 2016010317 | ISBN 9781498751407 (alk. paper)

Subjects: LCSH: Social sciences--Data processing. | Social

sciences--Statistical methods. | Data mining. | Big data.

Classification: LCC H61.3 .B55 2017 | DDC 300.285/6312--dc23

LC record available at https://lccn.loc.gov/2016010317

Visit the Taylor & Francis Web site at

http://www.taylorandfrancis.com

and the CRC Press Web site at

http://www.crcpress.com

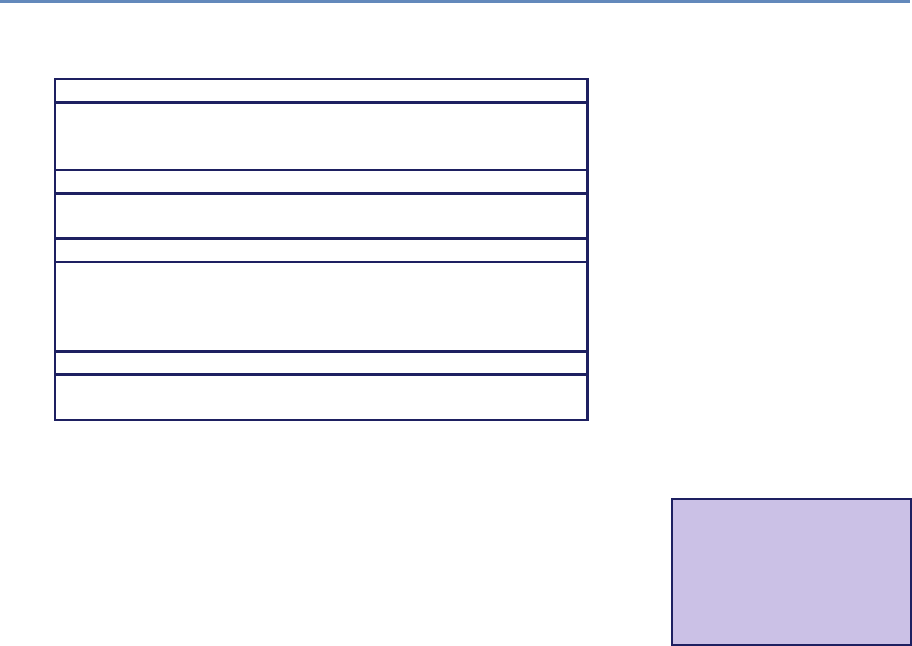

Contents

Preface xiii

Editors xv

Contributors xix

1 Introduction 1

1.1 Whythisbook?................................... 1

1.2 Defining big data and its value . . . . . . . . . . . . . . . . . . . . . . . . . . 3

1.3 Social science, inference, and big data . . . . . . . . . . . . . . . . . . . . . . 4

1.4 Social science, data quality, and big data . . . . . . . . . . . . . . . . . . . . 7

1.5 Newtoolsfornewdata............................... 9

1.6 Thebook’s“usecase” ............................... 10

1.7 The structure of the book . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 13

1.7.1 Part I: Capture and curation . . . . . . . . . . . . . . . . . . . . . . . 13

1.7.2 Part II: Modeling and analysis . . . . . . . . . . . . . . . . . . . . . . . 15

1.7.3 Part III: Inference and ethics . . . . . . . . . . . . . . . . . . . . . . . 16

1.8 Resources...................................... 17

I Capture and Curation 21

2 Working with Web Data and APIs 23

Cameron Neylon

2.1 Introduction .................................... 23

2.2 Scraping information from the web . . . . . . . . . . . . . . . . . . . . . . . . 24

2.2.1 Obtaining data from the HHMI website . . . . . . . . . . . . . . . . . . 24

2.2.2 Limitsofscraping ............................. 30

2.3 New data in the research enterprise . . . . . . . . . . . . . . . . . . . . . . . 31

2.4 Afunctionalview.................................. 37

2.4.1 Relevant APIs and resources . . . . . . . . . . . . . . . . . . . . . . . 38

2.4.2 RESTful APIs, returned data, and Python wrappers . . . . . . . . . . . 38

2.5 Programming against an API . . . . . . . . . . . . . . . . . . . . . . . . . . . 41

vii

viii Contents

2.6 Using the ORCID API via a wrapper . . . . . . . . . . . . . . . . . . . . . . . . 42

2.7 Quality, scope, and management . . . . . . . . . . . . . . . . . . . . . . . . . 44

2.8 Integrating data from multiple sources . . . . . . . . . . . . . . . . . . . . . . 46

2.8.1 TheLagottoAPI .............................. 46

2.8.2 Working with a corpus . . . . . . . . . . . . . . . . . . . . . . . . . . . 52

2.9 Working with the graph of relationships . . . . . . . . . . . . . . . . . . . . . 58

2.9.1 Citation links between articles . . . . . . . . . . . . . . . . . . . . . . 58

2.9.2 Categories, sources, and connections . . . . . . . . . . . . . . . . . . . 60

2.9.3 Data availability and completeness . . . . . . . . . . . . . . . . . . . . 61

2.9.4 The value of sparse dynamic data . . . . . . . . . . . . . . . . . . . . . 62

2.10 Bringing it together: Tracking pathways to impact . . . . . . . . . . . . . . . 65

2.10.1 Network analysis approaches . . . . . . . . . . . . . . . . . . . . . . . 66

2.10.2 Future prospects and new data sources . . . . . . . . . . . . . . . . . 66

2.11 Summary...................................... 67

2.12 Resources...................................... 69

2.13 Acknowledgements and copyright . . . . . . . . . . . . . . . . . . . . . . . . . 70

3 Record Linkage 71

Joshua Tokle and Stefan Bender

3.1 Motivation ..................................... 71

3.2 Introduction to record linkage . . . . . . . . . . . . . . . . . . . . . . . . . . . 72

3.3 Preprocessing data for record linkage . . . . . . . . . . . . . . . . . . . . . . . 76

3.4 Indexingandblocking............................... 78

3.5 Matching ...................................... 80

3.5.1 Rule-based approaches . . . . . . . . . . . . . . . . . . . . . . . . . . 82

3.5.2 Probabilistic record linkage . . . . . . . . . . . . . . . . . . . . . . . . 83

3.5.3 Machine learning approaches to linking . . . . . . . . . . . . . . . . . 85

3.5.4 Disambiguating networks . . . . . . . . . . . . . . . . . . . . . . . . . 88

3.6 Classification.................................... 88

3.6.1 Thresholds ................................. 89

3.6.2 One-to-onelinks.............................. 90

3.7 Record linkage and data protection . . . . . . . . . . . . . . . . . . . . . . . . 91

3.8 Summary...................................... 92

3.9 Resources...................................... 92

4 Databases 93

Ian Foster and Pascal Heus

4.1 Introduction .................................... 93

4.2 DBMS:Whenandwhy............................... 94

4.3 RelationalDBMSs ................................. 100

4.3.1 Structured Query Language (SQL) . . . . . . . . . . . . . . . . . . . . 102

4.3.2 Manipulating and querying data . . . . . . . . . . . . . . . . . . . . . 102

4.3.3 Schema design and definition . . . . . . . . . . . . . . . . . . . . . . . 105

Contents ix

4.3.4 Loadingdata ................................ 107

4.3.5 Transactions and crash recovery . . . . . . . . . . . . . . . . . . . . . 108

4.3.6 Database optimizations . . . . . . . . . . . . . . . . . . . . . . . . . . 109

4.3.7 Caveats and challenges . . . . . . . . . . . . . . . . . . . . . . . . . . 112

4.4 Linking DBMSs and other tools . . . . . . . . . . . . . . . . . . . . . . . . . . 113

4.5 NoSQLdatabases ................................. 116

4.5.1 Challenges of scale: The CAP theorem . . . . . . . . . . . . . . . . . . 116

4.5.2 NoSQL and key–value stores . . . . . . . . . . . . . . . . . . . . . . . 117

4.5.3 Other NoSQL databases . . . . . . . . . . . . . . . . . . . . . . . . . . 119

4.6 Spatialdatabases ................................. 120

4.7 Whichdatabasetouse? .............................. 122

4.7.1 RelationalDBMSs ............................. 122

4.7.2 NoSQLDBMSs............................... 123

4.8 Summary...................................... 123

4.9 Resources...................................... 124

5 Programming with Big Data 125

Huy Vo and Claudio Silva

5.1 Introduction .................................... 125

5.2 The MapReduce programming model . . . . . . . . . . . . . . . . . . . . . . . 127

5.3 Apache Hadoop MapReduce . . . . . . . . . . . . . . . . . . . . . . . . . . . . 129

5.3.1 The Hadoop Distributed File System . . . . . . . . . . . . . . . . . . . 130

5.3.2 Hadoop: Bringing compute to the data . . . . . . . . . . . . . . . . . . 131

5.3.3 Hardware provisioning . . . . . . . . . . . . . . . . . . . . . . . . . . . 134

5.3.4 Programming language support . . . . . . . . . . . . . . . . . . . . . . 136

5.3.5 Faulttolerance............................... 137

5.3.6 Limitations of Hadoop . . . . . . . . . . . . . . . . . . . . . . . . . . . 137

5.4 ApacheSpark.................................... 138

5.5 Summary...................................... 141

5.6 Resources...................................... 143

II Modeling and Analysis 145

6 Machine Learning 147

Rayid Ghani and Malte Schierholz

6.1 Introduction .................................... 147

6.2 What is machine learning? . . . . . . . . . . . . . . . . . . . . . . . . . . . . 148

6.3 The machine learning process . . . . . . . . . . . . . . . . . . . . . . . . . . . 150

6.4 Problem formulation: Mapping a problem to machine learning methods . . . . 151

6.5 Methods....................................... 153

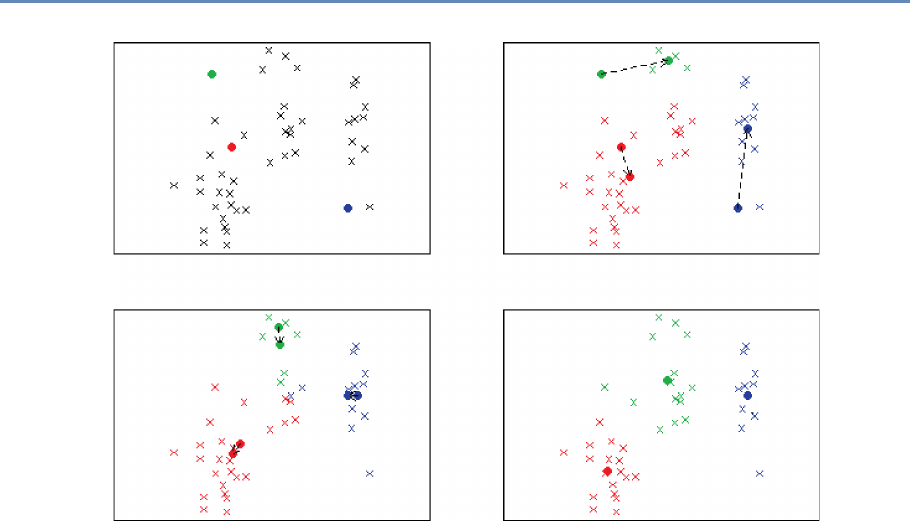

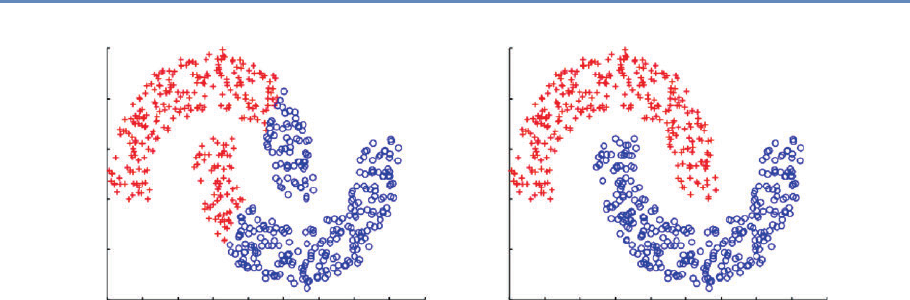

6.5.1 Unsupervised learning methods . . . . . . . . . . . . . . . . . . . . . . 153

6.5.2 Supervised learning . . . . . . . . . . . . . . . . . . . . . . . . . . . . 161

x Contents

6.6 Evaluation ..................................... 173

6.6.1 Methodology ................................ 173

6.6.2 Metrics ................................... 176

6.7 Practicaltips .................................... 180

6.7.1 Features .................................. 180

6.7.2 Machine learning pipeline . . . . . . . . . . . . . . . . . . . . . . . . . 181

6.7.3 Multiclass problems . . . . . . . . . . . . . . . . . . . . . . . . . . . . 181

6.7.4 Skewed or imbalanced classification problems . . . . . . . . . . . . . . 182

6.8 How can social scientists benefit from machine learning? . . . . . . . . . . . . 183

6.9 Advancedtopics .................................. 185

6.10 Summary...................................... 185

6.11 Resources...................................... 186

7 Text Analysis 187

Evgeny Klochikhin and Jordan Boyd-Graber

7.1 Understanding what people write . . . . . . . . . . . . . . . . . . . . . . . . . 187

7.2 Howtoanalyzetext ................................ 189

7.2.1 Processing text data . . . . . . . . . . . . . . . . . . . . . . . . . . . . 190

7.2.2 How much is a word worth? . . . . . . . . . . . . . . . . . . . . . . . . 192

7.3 Approaches and applications . . . . . . . . . . . . . . . . . . . . . . . . . . . 193

7.3.1 Topicmodeling............................... 193

7.3.1.1 Inferring topics from raw text . . . . . . . . . . . . . . . . . 194

7.3.1.2 Applications of topic models . . . . . . . . . . . . . . . . . . 197

7.3.2 Information retrieval and clustering . . . . . . . . . . . . . . . . . . . 198

7.3.3 Otherapproaches ............................. 205

7.4 Evaluation ..................................... 208

7.5 Textanalysistools ................................. 210

7.6 Summary...................................... 212

7.7 Resources...................................... 213

8 Networks: The Basics 215

Jason Owen-Smith

8.1 Introduction .................................... 215

8.2 Networkdata.................................... 218

8.2.1 Forms of network data . . . . . . . . . . . . . . . . . . . . . . . . . . . 218

8.2.2 Inducing one-mode networks from two-mode data . . . . . . . . . . . 220

8.3 Networkmeasures................................. 224

8.3.1 Reachability ................................ 224

8.3.2 Whole-network measures . . . . . . . . . . . . . . . . . . . . . . . . . 225

8.4 Comparing collaboration networks . . . . . . . . . . . . . . . . . . . . . . . . 234

8.5 Summary...................................... 238

8.6 Resources...................................... 239

Contents xi

III Inference and Ethics 241

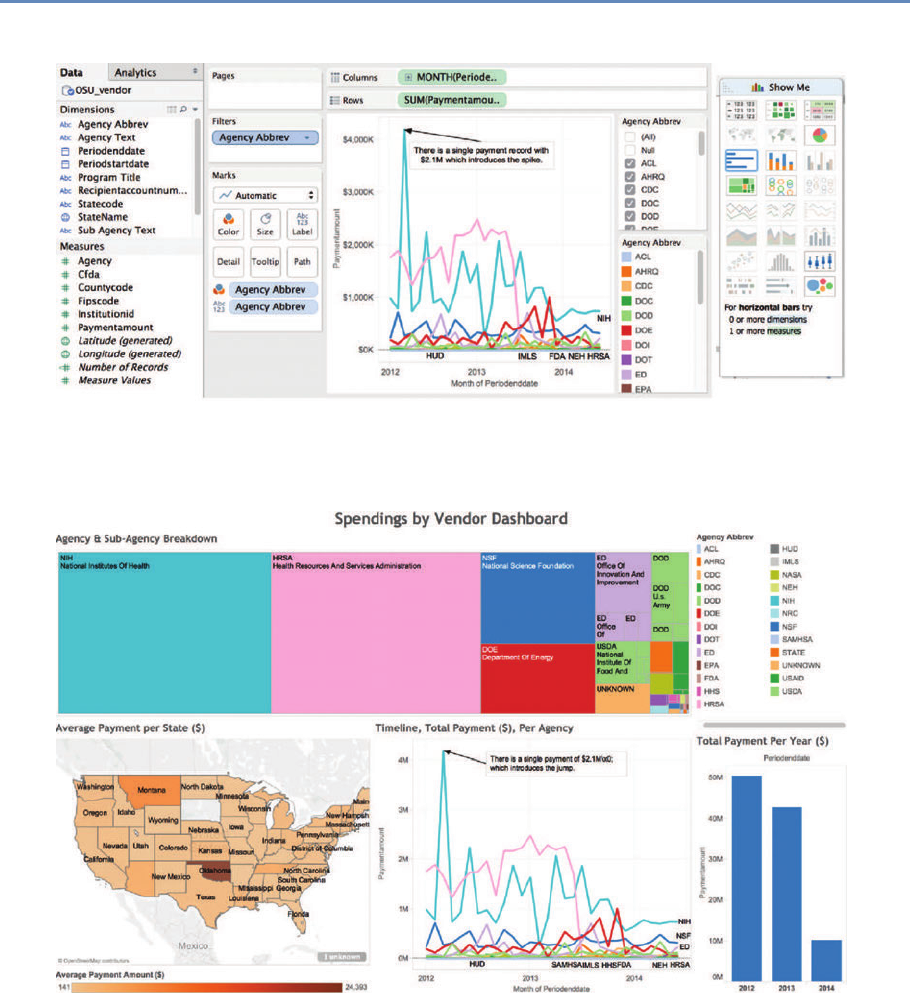

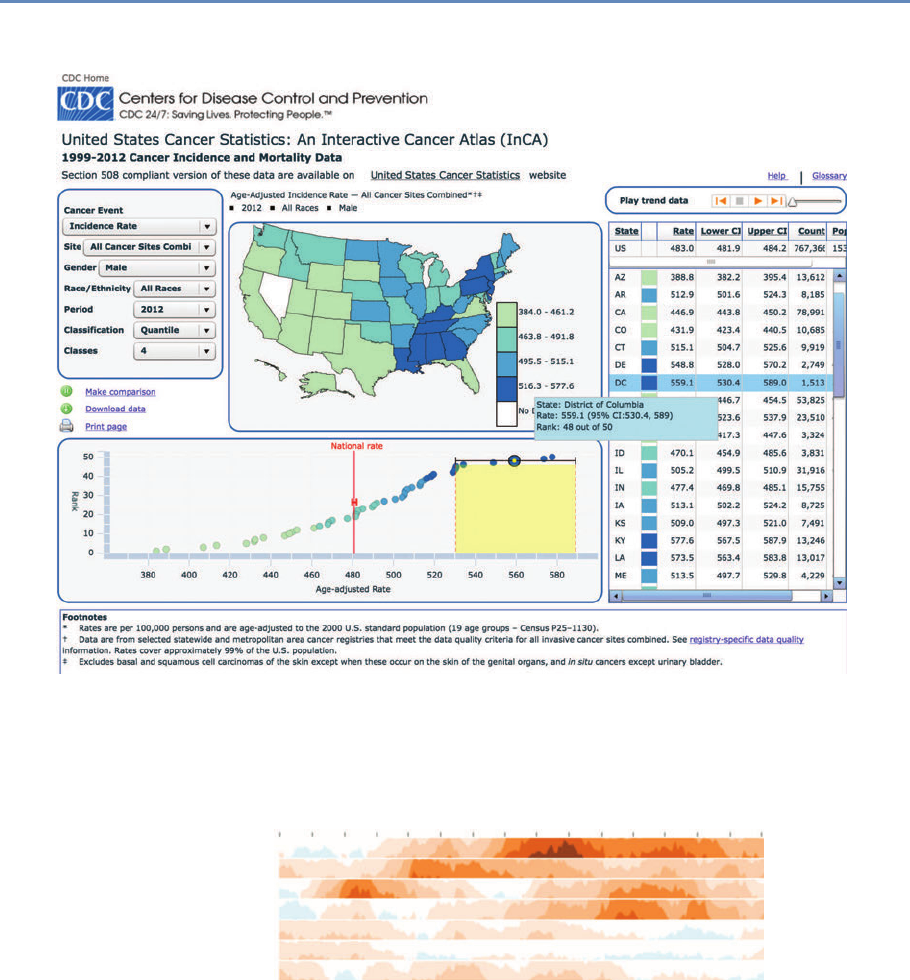

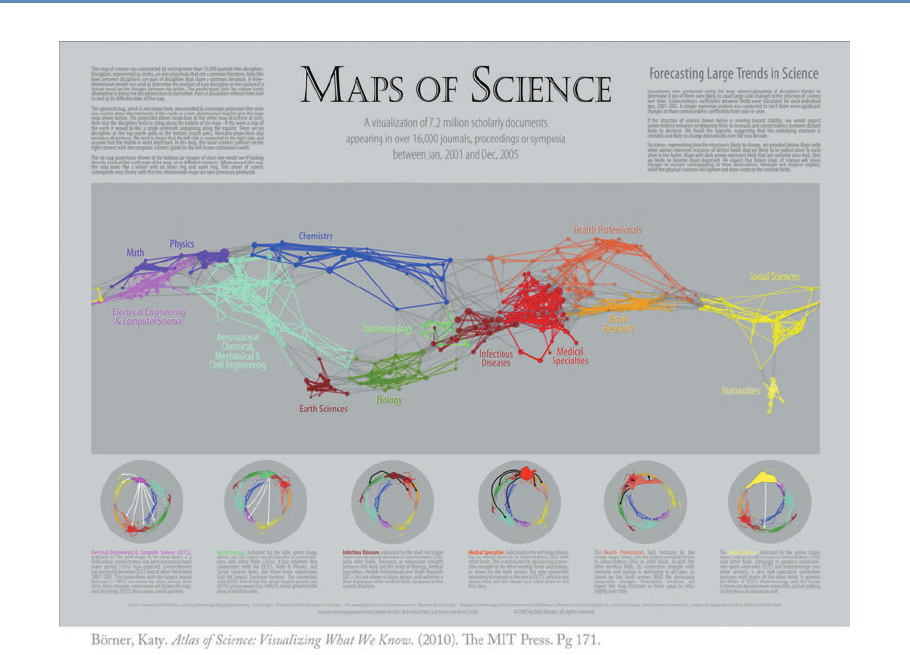

9 Information Visualization 243

M. Adil Yalçın and Catherine Plaisant

9.1 Introduction .................................... 243

9.2 Developing effective visualizations . . . . . . . . . . . . . . . . . . . . . . . . 244

9.3 A data-by-tasks taxonomy . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 249

9.3.1 Multivariatedata.............................. 249

9.3.2 Spatialdata................................. 251

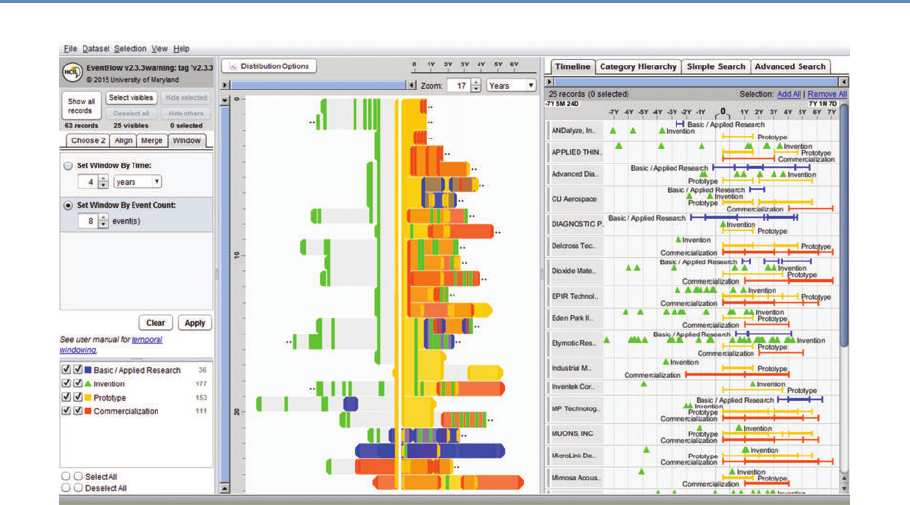

9.3.3 Temporaldata ............................... 252

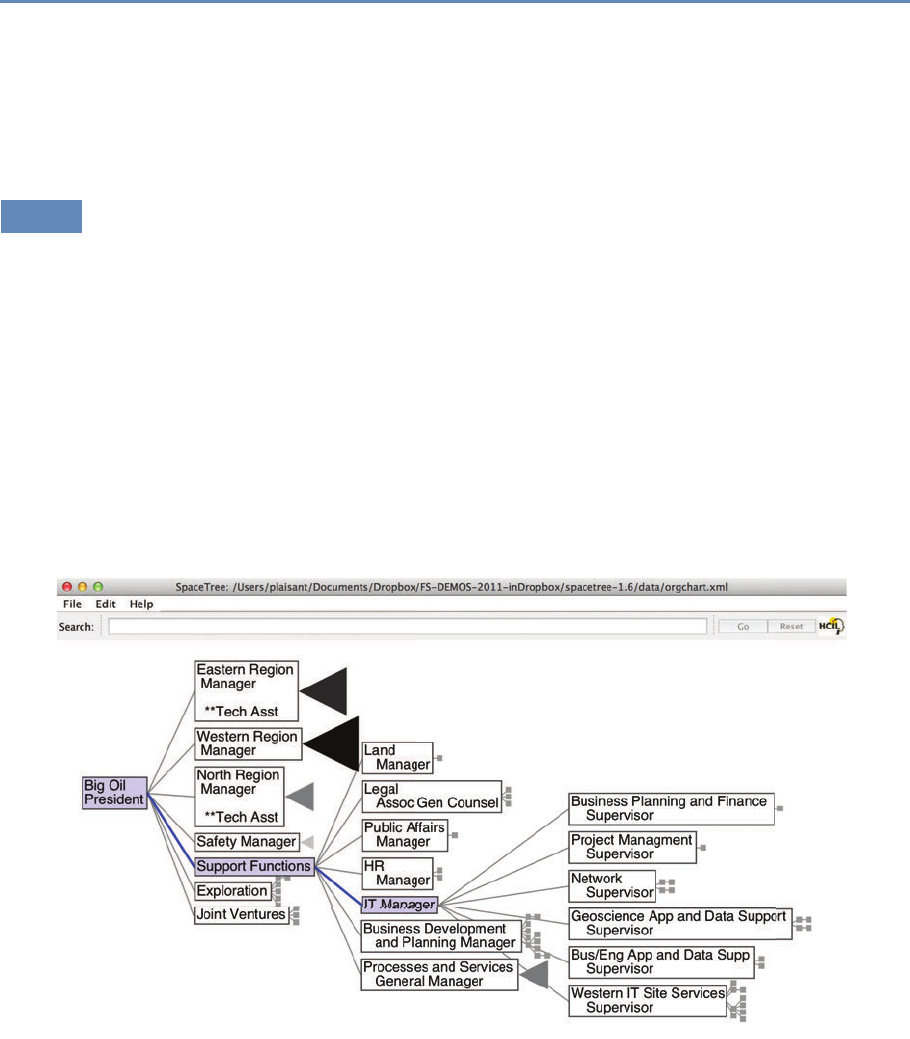

9.3.4 Hierarchicaldata.............................. 255

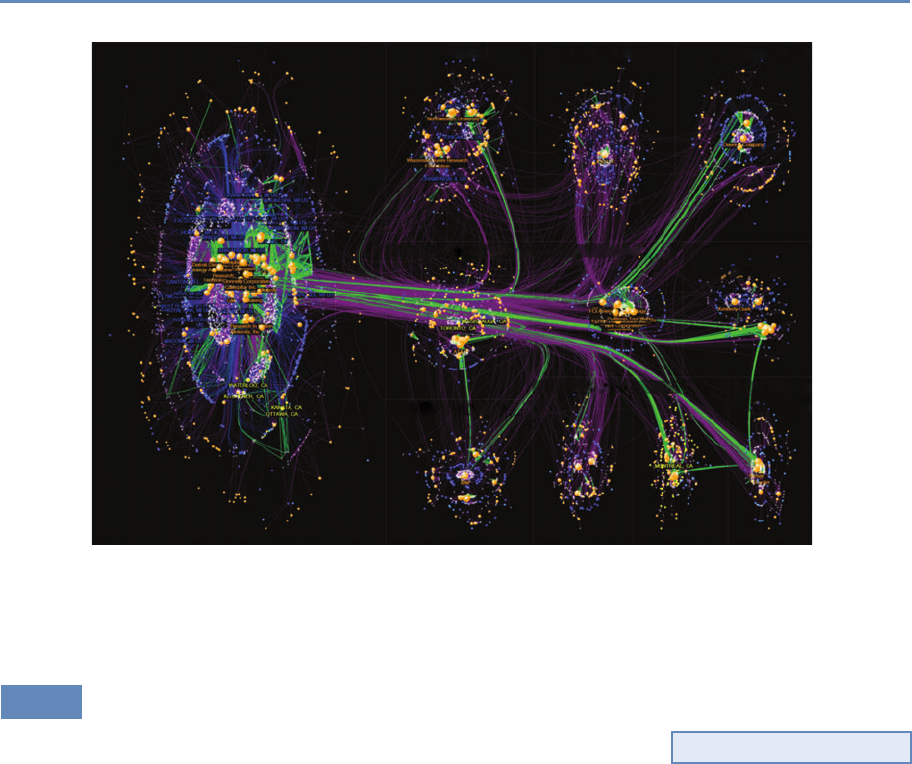

9.3.5 Networkdata................................ 257

9.3.6 Textdata .................................. 259

9.4 Challenges ..................................... 259

9.4.1 Scalability ................................. 260

9.4.2 Evaluation ................................. 261

9.4.3 Visualimpairment............................. 261

9.4.4 Visualliteracy ............................... 262

9.5 Summary...................................... 262

9.6 Resources...................................... 263

10 Errors and Inference 265

Paul P. Biemer

10.1 Introduction .................................... 265

10.2 The total error paradigm . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 266

10.2.1 The traditional model . . . . . . . . . . . . . . . . . . . . . . . . . . . 266

10.2.2 Extending the framework to big data . . . . . . . . . . . . . . . . . . . 273

10.3 Illustrations of errors in big data . . . . . . . . . . . . . . . . . . . . . . . . . 275

10.4 Errors in big data analytics . . . . . . . . . . . . . . . . . . . . . . . . . . . . 277

10.4.1 Errors resulting from volume, velocity, and variety, assuming perfect

veracity................................... 277

10.4.2 Errors resulting from lack of veracity . . . . . . . . . . . . . . . . . . . 279

10.4.2.1 Variable and correlated error . . . . . . . . . . . . . . . . . . 280

10.4.2.2 Models for categorical data . . . . . . . . . . . . . . . . . . . 282

10.4.2.3 Misclassification and rare classes . . . . . . . . . . . . . . . 283

10.4.2.4 Correlation analysis . . . . . . . . . . . . . . . . . . . . . . . 284

10.4.2.5 Regression analysis . . . . . . . . . . . . . . . . . . . . . . . 288

10.5 Some methods for mitigating, detecting, and compensating for errors . . . . . 290

10.6 Summary...................................... 295

10.7 Resources...................................... 296

xii Contents

11 Privacy and Confidentiality 299

Stefan Bender, Ron Jarmin, Frauke Kreuter, and Julia Lane

11.1 Introduction .................................... 299

11.2 Why is access important? . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 303

11.3 Providingaccess .................................. 305

11.4 Thenewchallenges ................................ 306

11.5 Legal and ethical framework . . . . . . . . . . . . . . . . . . . . . . . . . . . . 308

11.6 Summary...................................... 310

11.7 Resources...................................... 311

12 Workbooks 313

Jonathan Scott Morgan, Christina Jones, and Ahmad Emad

12.1 Introduction .................................... 313

12.2 Environment .................................... 314

12.2.1 Running workbooks locally . . . . . . . . . . . . . . . . . . . . . . . . 314

12.2.2 Central workbook server . . . . . . . . . . . . . . . . . . . . . . . . . . 315

12.3 Workbookdetails.................................. 315

12.3.1 Social Media and APIs . . . . . . . . . . . . . . . . . . . . . . . . . . . 315

12.3.2Databasebasics .............................. 316

12.3.3DataLinkage................................ 316

12.3.4MachineLearning ............................. 317

12.3.5TextAnalysis................................ 317

12.3.6Networks .................................. 318

12.3.7Visualization ................................ 318

12.4 Resources...................................... 319

Bibliography 321

Index 349

Preface

The class on which this book is based was created in response to a

very real challenge: how to introduce new ideas and methodologies

about economic and social measurement into a workplace focused

on producing high-quality statistics. We are deeply grateful for the

inspiration and support of Census Bureau Director John Thompson

and Deputy Director Nancy Potok in designing and implementing

the class content and structure.

As with any book, there are many people to be thanked. We

are grateful to Christina Jones, Ahmad Emad, Josh Tokle from

the American Institutes for Research, and Jonathan Morgan from

Michigan State University, who, together with Alan Marco and Julie

Caruso from the US Patent and Trademark Office, Theresa Leslie

from the Census Bureau, Brigitte Raumann from the University of

Chicago, and Lisa Jaso from Summit Consulting, actually made the

class happen.

We are also grateful to the students of three “Big Data for Fed-

eral Statistics” classes in which we piloted this material, and to the

instructors and speakers beyond those who contributed as authors

to this edited volume—Dan Black, Nick Collier, Ophir Frieder, Lee

Giles, Bob Goerge, Laure Haak, Madian Khabsa, Jonathan Ozik,

Ben Shneiderman, and Abe Usher. The book would not exist with-

out them.

We thank Trent Buskirk, Davon Clarke, Chase Coleman, Ste-

phanie Eckman, Matt Gee, Laurel Haak, Jen Helsby, Madian Khabsa,

Ulrich Kohler, Charlotte Oslund, Rod Little, Arnaud Sahuguet, Tim

Savage, Severin Thaler, and Joe Walsh for their helpful comments

on drafts of this material.

We also owe a great debt to the copyeditor, Richard Leigh; the

project editor, Charlotte Byrnes; and the publisher, Rob Calver, for

their hard work and dedication.

xiii

Editors

Ian Foster is a Professor of Computer Science at the University of

Chicago and a Senior Scientist and Distinguished Fellow at Argonne

National Laboratory.

Ian has a long record of research contributions in high-perfor-

mance computing, distributed systems, and data-driven discovery.

He has also led US and international projects that have produced

widely used software systems and scientific computing infrastruc-

tures. He has published hundreds of scientific papers and six books

on these and other topics. Ian is an elected fellow of the Amer-

ican Association for the Advancement of Science, the Association

for Computing Machinery, and the British Computer Society. His

awards include the British Computer Society’s Lovelace Medal and

the IEEE Tsutomu Kanai award.

Rayid Ghani is the Director of the Center for Data Science and

Public Policy and a Senior Fellow at the Harris School of Public

Policy and the Computation Institute at the University of Chicago.

Rayid is a reformed computer scientist and wannabe social scientist,

but mostly just wants to increase the use of data-driven approaches

in solving large public policy and social challenges. He is also pas-

sionate about teaching practical data science and started the Eric

and Wendy Schmidt Data Science for Social Good Fellowship at the

University of Chicago that trains computer scientists, statisticians,

and social scientists from around the world to work on data science

problems with social impact.

Before joining the University of Chicago, Rayid was the Chief

Scientist of the Obama 2012 Election Campaign, where he focused

on data, analytics, and technology to target and influence voters,

donors, and volunteers. Previously, he was a Research Scientist

and led the Machine Learning group at Accenture Labs. Rayid did

his graduate work in machine learning at Carnegie Mellon Univer-

sity and is actively involved in organizing data science related con-

xv

xvi Editors

ferences and workshops. In his ample free time, Rayid works with

non-profits and government agencies to help them with their data,

analytics, and digital efforts and strategy.

Ron S. Jarmin is the Assistant Director for Research and Method-

ology at the US Census Bureau. He formerly was the Bureau’s Chief

Economist and Chief of the Center for Economic Studies and a Re-

search Economist. He holds a PhD in economics from the University

of Oregon and has published papers in the areas of industrial or-

ganization, business dynamics, entrepreneurship, technology and

firm performance, urban economics, data access, and statistical

disclosure avoidance. He oversees a broad research program in

statistics, survey methodology, and economics to improve economic

and social measurement within the federal statistical system.

Frauke Kreuter is a Professor in the Joint Program in Survey Meth-

odology at the University of Maryland, Professor of Methods and

Statistics at the University of Mannheim, and head of the statistical

methods group at the German Institute for Employment Research

in Nuremberg. Previously she held positions in the Department of

Statistics at the University of California Los Angeles (UCLA), and

the Department of Statistics at the Ludwig-Maximillian’s University

of Munich. Frauke serves on several advisory boards for National

Statistical Institutes around the world and within the Federal Sta-

tistical System in the United States. She recently served as the

co-chair of the Big Data Task Force of the American Association

for Public Opinion Research. She is a Gertrude Cox Award win-

ner, recognizing statisticians in early- to mid-career who have made

significant breakthroughs in statistical practice, and an elected fel-

low of the American Statistical Association. Her textbooks on Data

Analysis Using Stata and Practical Tools for Designing and Weighting

Survey Samples are used at universities worldwide, including Har-

vard University, Johns Hopkins University, Massachusetts Insti-

tute of Technology, Princeton University, and the University College

London. Her Massive Open Online Course in Questionnaire De-

sign attracted over 70,000 learners within the first year. Recently

Frauke launched the international long-distance professional edu-

cation program sponsored by the German Federal Ministry of Edu-

cation and Research in Survey and Data Science.

Editors xvii

Julia Lane is a Professor at the New York University Wagner Grad-

uate School of Public Service and at the NYU Center for Urban Sci-

ence and Progress, and she is a NYU Provostial Fellow for Innovation

Analytics.

Julia has led many initiatives, including co-founding the UMET-

RICS and STAR METRICS programs at the National Science Foun-

dation. She conceptualized and established a data enclave at

NORC/University of Chicago. She also co-founded the creation and

permanent establishment of the Longitudinal Employer-Household

Dynamics Program at the US Census Bureau and the Linked Em-

ployer Employee Database at Statistics New Zealand. Julia has

published over 70 articles in leading journals, including Nature and

Science, and authored or edited ten books. She is an elected fellow

of the American Association for the Advancement of Science and a

fellow of the American Statistical Association.

Contributors

Stefan Bender

Deutsche Bundesbank

Frankfurt, Germany

Paul P. Biemer

RTI International

Raleigh, NC, USA

University of North Carolina

Chapel Hill, NC, USA

Jordan Boyd-Graber

University of Colorado

Boulder, CO, USA

Ahmad Emad

American Institutes for Research

Washington, DC, USA

Pascal Heus

Metadata Technology North America

Knoxville, TN, USA

Christina Jones

American Institutes for Research

Washington, DC, USA

Evgeny Klochikhin

American Institutes for Research

Washington, DC, USA

Jonathan Scott Morgan

Michigan State University

East Lansing, MI, USA

Cameron Neylon

Curtin University

Perth, Australia

Jason Owen-Smith

University of Michigan

Ann Arbor, MI, USA

Catherine Plaisant

University of Maryland

College Park, MD, USA

Malte Schierholz

University of Mannheim

Mannheim, Germany

Claudio Silva

New York University

New York, NY, USA

Joshua Tokle

Amazon

Seattle, WA, USA

Huy Vo

City University of New York

New York, NY, USA

M. Adil Yalçın

University of Maryland

College Park, MD, USA

xix

Introduction

Chapter 1

This section provides a brief overview of the goals and structure of

the book.

1.1 Why this book?

The world has changed for empirical social scientists. The new types

of “big data” have generated an entire new research field—that of

data science. That world is dominated by computer scientists who

have generated new ways of creating and collecting data, developed

new analytical and statistical techniques, and provided new ways of

visualizing and presenting information. These new sources of data

and techniques have the potential to transform the way applied

social science is done.

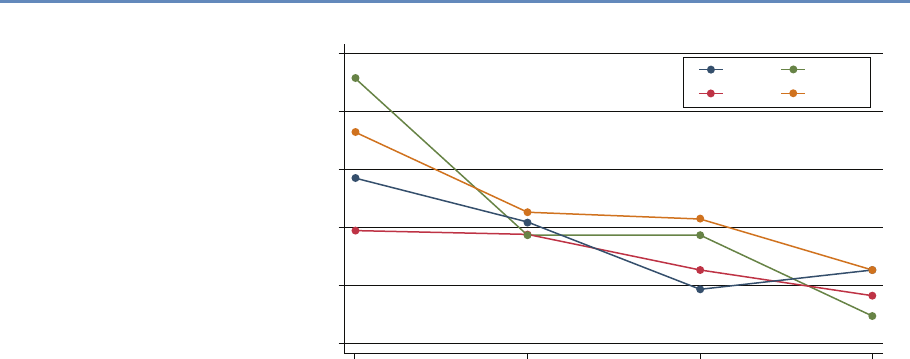

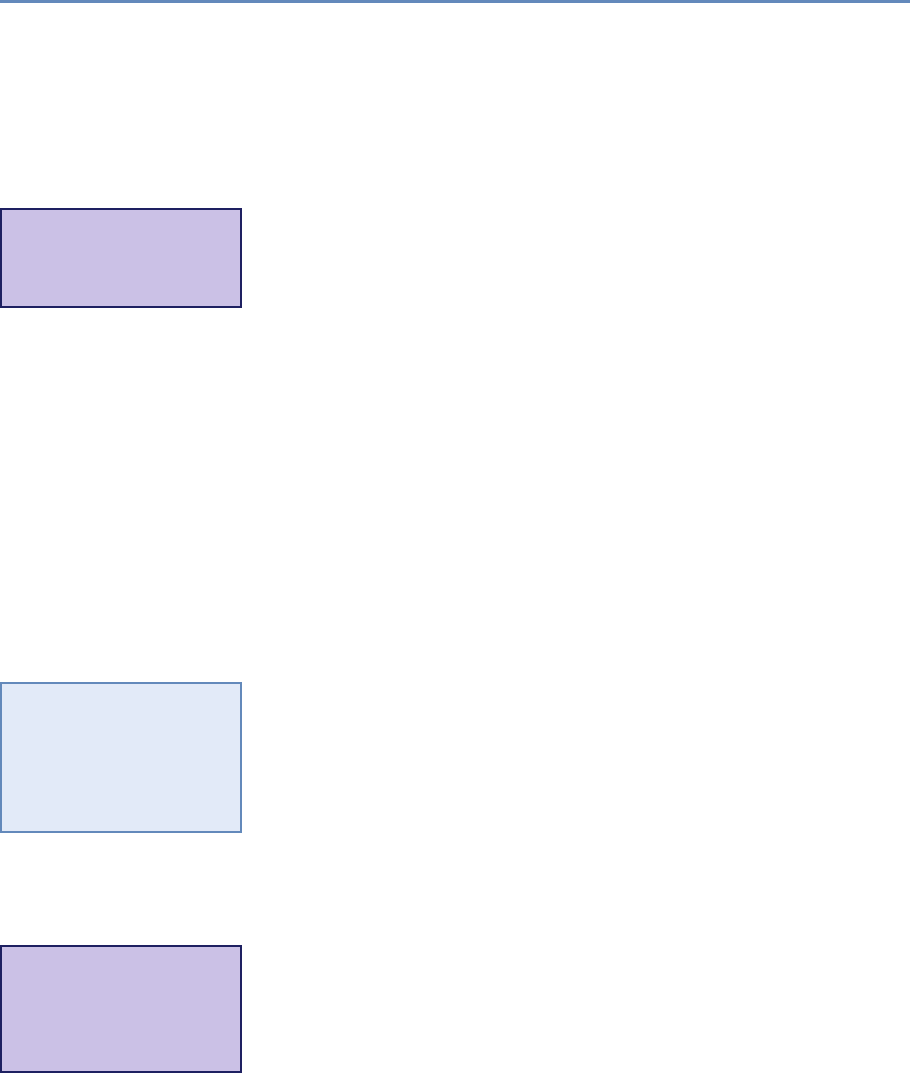

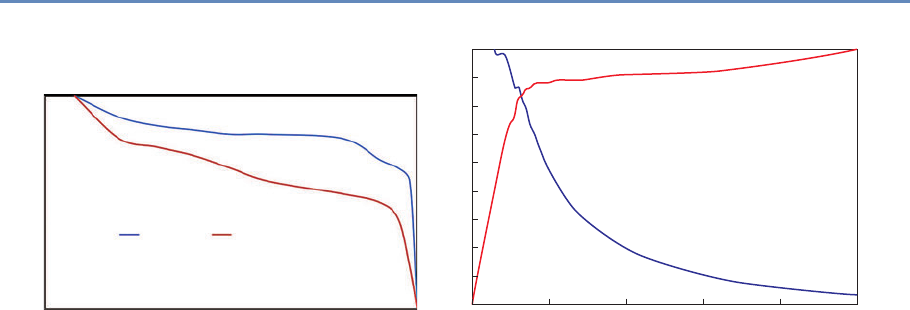

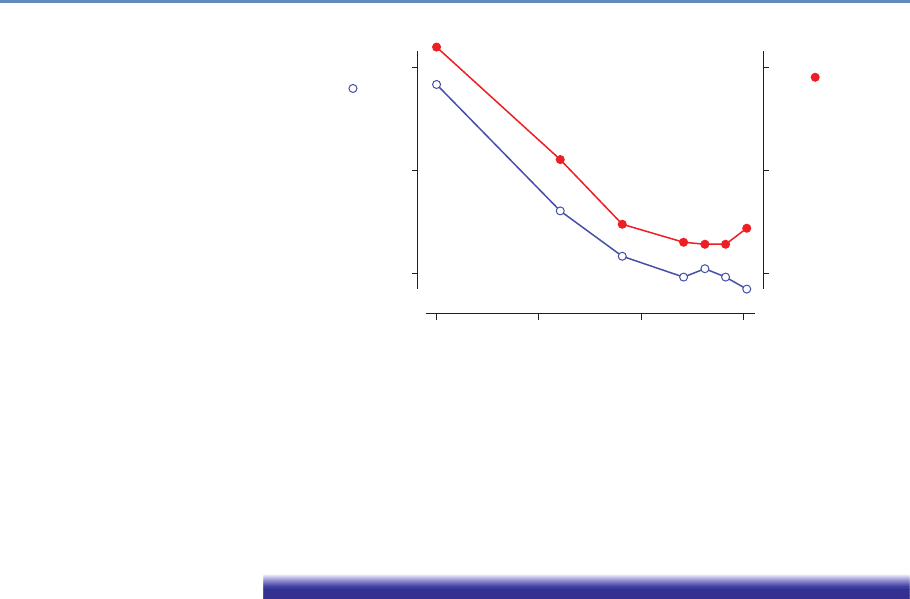

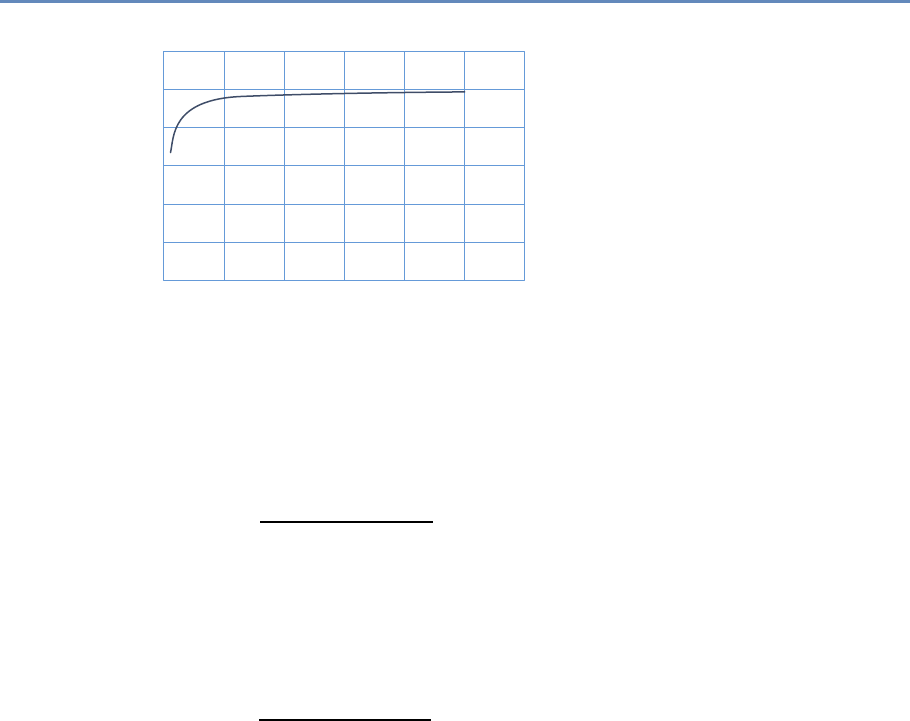

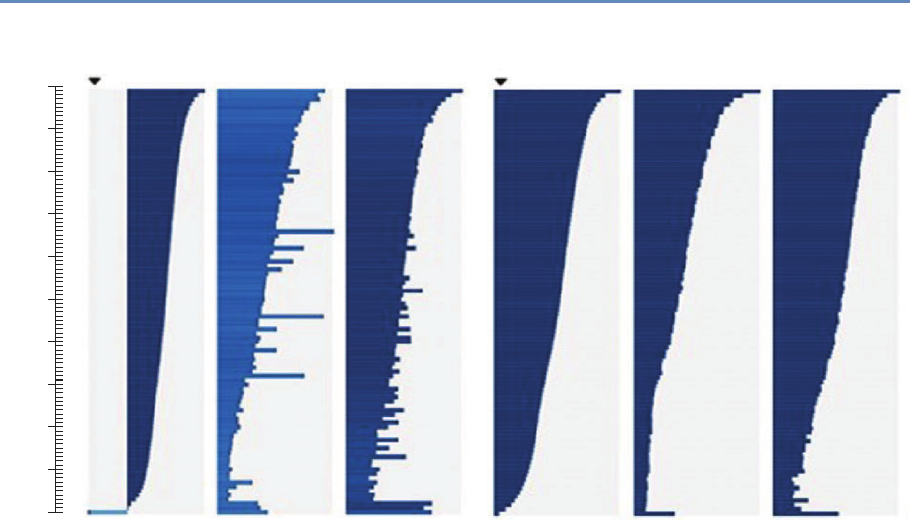

Research has certainly changed. Researchers draw on data that

are “found” rather than “made” by federal agencies; those publish-

ing in leading academic journals are much less likely today to draw

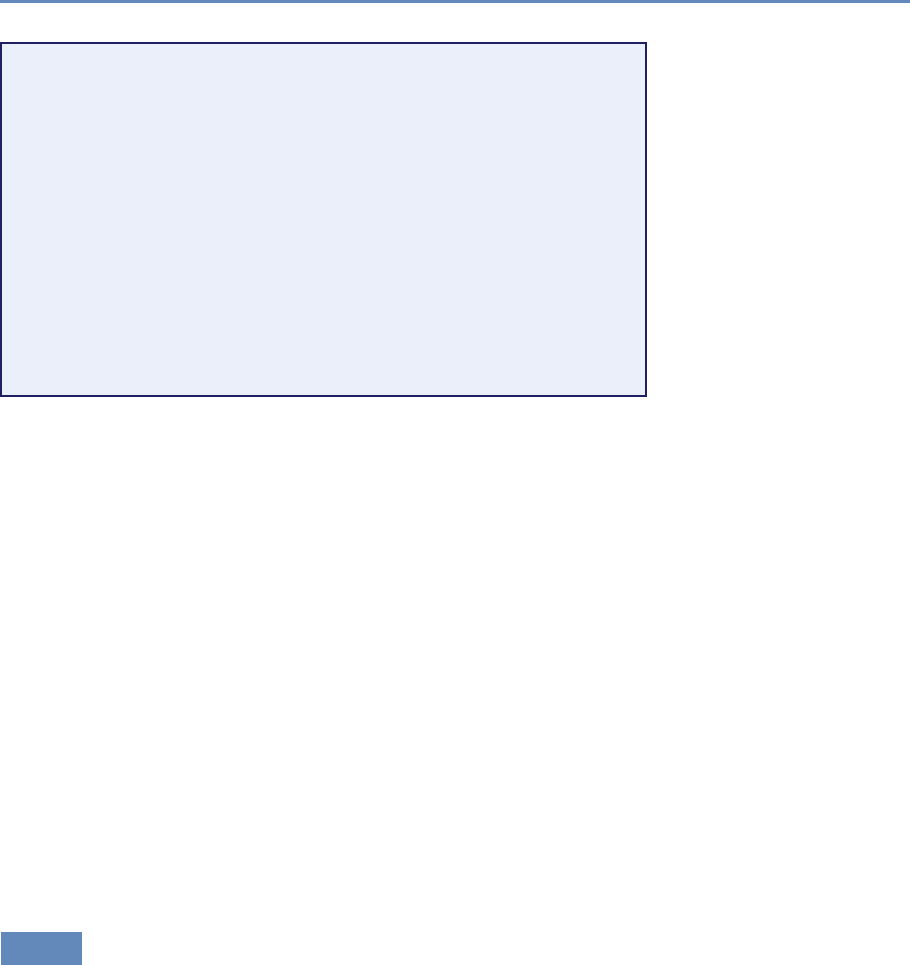

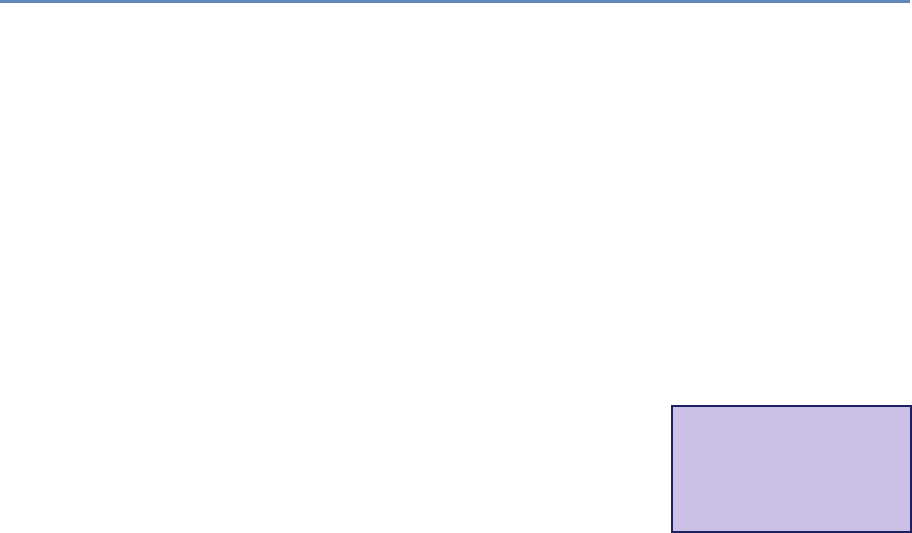

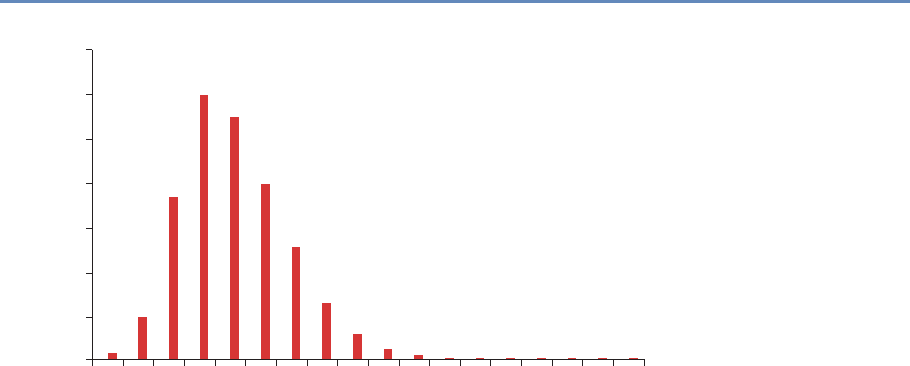

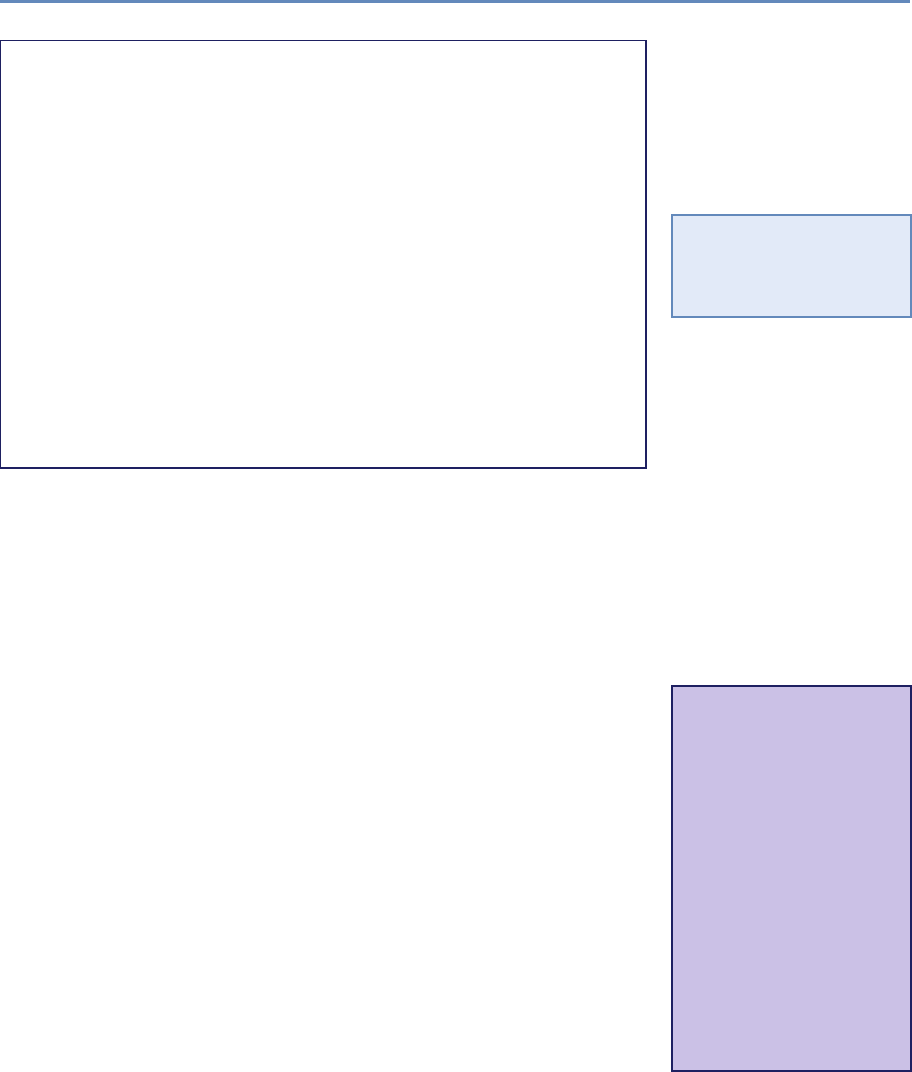

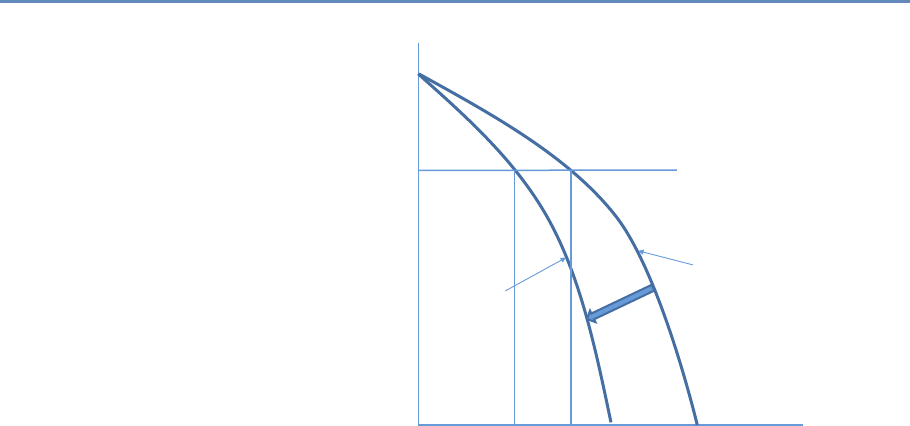

on preprocessed survey data (Figure 1.1).

The way in which data are used has also changed for both gov-

ernment agencies and businesses. Chief data officers are becoming

as common in federal and state governments as chief economists

were decades ago, and in cities like New York and Chicago, mayoral

offices of data analytics have the ability to provide rapid answers

to important policy questions [233]. But since federal, state, and

local agencies lack the capacity to do such analysis themselves [8],

they must make these data available either to consultants or to the

research community. Businesses are also learning that making ef-

fective use of their data assets can have an impact on their bottom

line [56].

And the jobs have changed. The new job title of “data scien-

tist” is highlighted in job advertisements on CareerBuilder.com and

Burning-glass.com—in the same category as statisticians, economists,

and other quantitative social scientists if starting salaries are useful

indicators.

1

2 1. Introduction

100

80

60

40

Micro-data Base Articles using Survey Data (%)

20

0

1980 1990

Note: “Pre-existing survey” data sets refer to micro surveys such as the CPS or

SIPP and do not include surveys designed by researchers for their study.

Sample excludes studies whose primary data source is from developing countries.

AER QJE

ECMA

JPE

2000

Year

2010

Figure 1.1. Use of pre-existing survey data in publications in leading journals,

1980–2010 [74]

The goal of this book is to provide social scientists with an un-

derstanding of the key elements of this new science, its value, and

the opportunities for doing better work. The goal is also to identify

the many ways in which the analytical toolkits possessed by social

scientists can be brought to bear to enhance the generalizability of

the work done by computer scientists.

We take a pragmatic approach, drawing on our experience of

working with data. Most social scientists set out to solve a real-

world social or economic problem: they frame the problem, identify

the data, do the analysis, and then draw inferences. At all points,

of course, the social scientist needs to consider the ethical ramifi-

cations of their work, particularly respecting privacy and confiden-

tiality. The book follows the same structure. We chose a particular

problem—the link between research investments and innovation—

because that is a major social science policy issue, and one in which

social scientists have been addressing using big data techniques.

While the example is specific and intended to show how abstract

concepts apply in practice, the approach is completely generaliz-

able. The web scraping, linkage, classification, and text analysis

methods on display here are canonical in nature. The inference

1.2. Defining big data and its value 3

and privacy and confidentiality issues are no different than in any

other study involving human subjects, and the communication of

results through visualization is similarly generalizable.

1.2 Defining big data and its value

There are almost as many definitions of big data as there are new

types of data. One approach is to define big data as anything too big ◮This topic is discussed in

more detail in Chapter 5.

to fit onto your computer. Another approach is to define it as data

with high volume, high velocity, and great variety. We choose the

description adopted by the American Association of Public Opinion

Research: “The term ‘Big Data’ is an imprecise description of a

rich and complicated set of characteristics, practices, techniques,

ethical issues, and outcomes all associated with data” [188].

The value of the new types of data for social science is quite

substantial. Personal data has been hailed as the “new oil” of the

twenty-first century, and the benefits to policy, society, and public

opinion research are undeniable [139]. Policymakers have found

that detailed data on human beings can be used to reduce crime,

improve health delivery, and manage cities better [205]. The scope

is broad indeed: one of this book’s editors has used such data to

not only help win political campaigns but also show its potential

for public policy. Society can gain as well—recent work shows data-

driven businesses were 5% more productive and 6% more profitable

than their competitors [56]. In short, the vision is that social sci-

ence researchers can potentially, by using data with high velocity,

variety, and volume, increase the scope of their data collection ef-

forts while at the same time reducing costs and respondent burden,

increasing timeliness, and increasing precision [265].

Example: New data enable new analyses

Spotshotter data, which have fairly detailed information for each gunfire incident,

such as the precise timestamp and the nearest address, as well as the type of

shot, can be used to improve crime data [63]; Twitter data can be used to improve

predictions around job loss, job gain, and job postings [17]; and eBay postings can

be used to estimate demand elasticities [104].

But most interestingly, the new data can change the way we

think about measuring and making inferences about behavior. For

4 1. Introduction

example, it enables the capture of information on the subject’s en-

tire environment—thus, for example, the effect of fast food caloric

labeling in health interventions [105]; the productivity of a cashier

if he is within eyesight of a highly productive cashier but not oth-

erwise [252]. So it offers the potential to understand the effects of

complex environmental inputs on human behavior. In addition, big

data, by its very nature, enables us to study the tails of a distribu-

tion in a way that is not possible with small data. Much of interest

in human behavior is driven by the tails of the distribution—health

care costs by small numbers of ill people [356], economic activity

and employment by a small number of firms [93,109]—and is impos-

sible to study with the small sample sizes available to researchers.

Instead we are still faced with the same challenges and respon-

sibilities as we were before in the survey and small data collection

environment. Indeed, social scientists have a great deal to offer to a

(data) world that is currently looking to computer scientists to pro-

vide answers. Two major areas to which social scientists can con-

tribute, based on decades of experience and work with end users,

are inference and attention to data quality.

1.3 Social science, inference, and big data

The goal of empirical social science is to make inferences about a

population from available data. That requirement exists regardless

of the data source—and is a guiding principle for this book. For

probability-based survey data, methodology has been developed to

overcome problems in the data generating process. A guiding prin-

ciple for survey methodologists is the total survey error framework,

and statistical methods for weighting, calibration, and other forms

of adjustment are commonly used to mitigate errors in the survey

process. Likewise for “broken” experimental data, techniques like

propensity score adjustment and principal stratification are widely

used to fix flaws in the data generating process. Two books provide

frameworks for survey quality [35, 143].

◮This topic is discussed in

more detail in Chapter 10.

Across the social sciences, including economics, public policy,

sociology, management, (parts of) psychology and the like, we can

identify three categories of analysis with three different inferential

goals: description, causation, and prediction.

Description The job of many social scientists is to provide descrip-

tive statements about the population of interest. These could be

univariate, bivariate, or even multivariate statements. Chapter 6

1.3. Social science, inference, and big data 5

on machine learning will cover methods that go beyond simple de-

scriptive statistics, known as unsupervised learning methods.

Descriptive statistics are usually created based on census data

or sample surveys to generate some summary statistics like a mean,

median, or a graphical distribution to describe the population of in-

terest. In the case of a census, the work ends right there. With

sample surveys the point estimates come with measures of uncer-

tainties (standard errors). The estimation of standard errors has

been worked out for most descriptive statistics and most common

survey designs, even complex ones that include multiple layers of

sampling and disproportional selection probabilities [154, 385].

Example: Descriptive statistics

The US Bureau of Labor Statistics surveys about 60,000 households a month and

from that survey is able to describe national employment and unemployment levels.

For example, in November 2015, total nonfarm payroll employment increased by

211,000 in November, and the unemployment rate was unchanged at 5.0%. Job

gains occurred in construction, professional and technical services, and health

care. Mining and information lost jobs [57].

Proper inference, even for purely descriptive purposes, from a

sample to the population rests usually on knowing that everyone

from the target population had the chance to be included in the

survey, and knowing the selection probability for each element in

the population. The latter does not necessarily need to be known

prior to sampling, but eventually a probability is assigned for each

case. Getting the selection probabilities right is particularly impor-

tant when reporting totals [243]. Unfortunately in practice, samples

that start out as probability samples can suffer from a high rate of

nonresponse. Because the survey designer cannot completely con-

trol which units respond, the set of units that ultimately respond

cannot be considered to be a probability sample [257]. Nevertheless,

starting with a probability sample provides some degree of comfort

that a sample will have limited coverage errors (nonzero probability

of being in the sample), and there are methods for dealing with a

variety of missing data problems [240].

Causation In many cases, social scientists wish to test hypotheses,

often originating in theory, about relationships between phenomena

of interest. Ideally such tests stem from data that allow causal infer-

6 1. Introduction

ence: typically randomized experiments or strong nonexperimental

study designs. When examining the effect of Xon Y, knowing how

cases were selected into the sample or data set is much less impor-

tant in the estimation of causal effects than for descriptive studies,

for example, population means. What is important is that all ele-

ments of the inferential population have a chance of being selected

for the treatment [179]. In the debate about probability and non-

probability surveys, this distinction is often overlooked. Medical

researchers have operated with unknown study selection mecha-

nisms for years: for example, randomized trials that enroll only

selected samples.

Example: New data and causal inference

One of the major risks with using big data without thinking about the data source

is the misallocation of resources. Overreliance on, say, Twitter data in targeting re-

sources after hurricanes can lead to the misallocation of resources towards young,

Internet-savvy people with cell phones, and away from elderly or impoverished

neighborhoods [340]. Of course, all data collection approaches have had similar

risks. Bad survey methodology led the Literary Digest to incorrectly call the 1936

election [353]. Inadequate understanding of coverage, incentive and quality issues,

together with the lack of a comparison group, has hampered the use of adminis-

trative records—famously in the case of using administrative records on crime to

make inference about the role of death penalty policy in crime reduction [95].

Of course, in practice it is difficult to ensure that results are

generalizable, and there is always a concern that the treatment

effect on the treated is different than the treatment effect in the

full population of interest [365]. Having unknown study selection

probabilities makes it even more difficult to estimate population

causal effects, but substantial progress is being made [99,261]. As

long as we are able to model the selection process, there is no reason

not to do causal inference from so-called nonprobability data.

Prediction Forecasting or prediction tasks are a little less common

among applied social science researchers as a whole, but are cer-

tainly an important element for users of official statistics—in partic-

ular, in the context of social and economic indicators—as generally

for decision-makers in government and business. Here, similar to

the causal inference setting, it is of utmost importance that we do

know the process that generated the data, and we can rule out any

unknown or unobserved systematic selection mechanism.

1.4. Social science, data quality, and big data 7

Example: Learning from the flu

“Five years ago [in 2009], a team of researchers from Google announced a remark-

able achievement in one of the world’s top scientific journals, Nature. Without

needing the results of a single medical check-up, they were nevertheless able to

track the spread of influenza across the US. What’s more, they could do it more

quickly than the Centers for Disease Control and Prevention (CDC). Google’s track-

ing had only a day’s delay, compared with the week or more it took for the CDC

to assemble a picture based on reports from doctors’ surgeries. Google was faster

because it was tracking the outbreak by finding a correlation between what people

searched for online and whether they had flu symptoms. . . .

“Four years after the original Nature paper was published, Nature News had

sad tidings to convey: the latest flu outbreak had claimed an unexpected victim:

Google Flu Trends. After reliably providing a swift and accurate account of flu

outbreaks for several winters, the theory-free, data-rich model had lost its nose for

where flu was going. Google’s model pointed to a severe outbreak but when the

slow-and-steady data from the CDC arrived, they showed that Google’s estimates

of the spread of flu-like illnesses were overstated by almost a factor of two.

“The problem was that Google did not know—could not begin to know—what

linked the search terms with the spread of flu. Google’s engineers weren’t trying to

figure out what caused what. They were merely finding statistical patterns in the

data. They cared about correlation rather than causation” [155].

1.4 Social science, data quality, and big data

Most data in the real world are noisy, inconsistent, and suffers from

missing values, regardless of its source. Even if data collection

is cheap, the costs of creating high-quality data from the source—

cleaning, curating, standardizing, and integrating—are substantial. ◮This topic is discussed in

more detail in Chapter 3.

Data quality can be characterized in multiple ways [76]:

•Accuracy: How accurate are the attribute values in the data?

•Completeness: Is the data complete?

•Consistency: How consistent are the values in and between

the database(s)?

•Timeliness: How timely is the data?

•Accessibility: Are all variables available for analysis?

8 1. Introduction

Social scientists have decades of experience in transforming

messy, noisy, and unstructured data into a well-defined, clearly

structured, and quality-tested data set. Preprocessing is a complex

and time-consuming process because it is “hands-on”—it requires

judgment and cannot be effectively automated. A typical workflow

comprises multiple steps from data definition to parsing and ends

with filtering. It is difficult to overstate the value of preprocessing

for any data analysis, but this is particularly true in big data. Data

need to be parsed, standardized, deduplicated, and normalized.

Parsing is a fundamental step taken regardless of the data source,

and refers to the decomposition of a complex variable into compo-

nents. For example, a freeform address field like “1234 E 56th St”

might be broken down into a street number “1234” and a street

name “E 56th St.” The street name could be broken down further

to extract the cardinal direction “E” and the designation “St.” An-

other example would be a combined full name field that takes the

form of a comma-separated last name, first name, and middle initial

as in “Miller, David A.” Splitting these identifiers into components

permits the creation of more refined variables that can be used in

the matching step.

In the simplest case, the distinct parts of a character field are

delimited. In the name field example, it would be easy to create the

separate fields “Miller” and “David A” by splitting the original field

at the comma. In more complex cases, special code will have to

be written to parse the field. Typical steps in a parsing procedure

include:

1. Splitting fields into tokens (words) on the basis of delimiters,

2. Standardizing tokens by lookup tables and substitution by a

standard form,

3. Categorizing tokens,

4. Identifying a pattern of anchors, tokens, and delimiters,

5. Calling subroutines according to the identified pattern, therein

mapping of tokens to the predefined components.

Standardization refers to the process of simplifying data by re-

placing variant representations of the same underlying observation

by a default value in order to improve the accuracy of field com-

parisons. For example, “First Street” and “1st St” are two ways of

writing the same street name, but a simple string comparison of

these values will return a poor result. By standardizing fields—and

1.5. New tools for new data 9

using the same standardization rules across files!—the number of

true matches that are wrongly classified as nonmatches (i.e., the

number of false nonmatches) can be reduced.

Some common examples of standardization are:

•Standardization of different spellings of frequently occurring

words: for example, replacing common abbreviations in street

names (Ave, St, etc.) or titles (Ms, Dr, etc.) with a common

form. These kinds of rules are highly country- and language-

specific.

•General standardization, including converting character fields

to all uppercase and removing punctuation and digits.

Deduplication consists of removing redundant records from a

single list, that is, multiple records from the same list that refer to

the same underlying entity. After deduplication, each record in the

first list will have at most one true match in the second list and vice

versa. This simplifies the record linkage process and is necessary if

the goal of record linkage is to find the best set of one-to-one links

(as opposed to a list of all possible links). One can deduplicate a list

by applying record linkage techniques described in this chapter to

link a file to itself.

Normalization is the process of ensuring that the fields that are

being compared across files are as similar as possible in the sense

that they could have been generated by the same process. At min-

imum, the same standardization rules should be applied to both

files. For additional examples, consider a salary field in a survey.

There are number different ways that salary could be recorded: it

might be truncated as a privacy-preserving measure or rounded to

the nearest thousand, and missing values could be imputed with

the mean or with zero. During normalization we take note of exactly

how fields are recorded.

1.5 New tools for new data

The new data sources that we have discussed frequently require

working at scales for which the social scientist’s familiar tools are

not designed. Fortunately, the wider research and data analytics

community has developed a wide variety of often more scalable and

flexible tools—tools that we will introduce within this book.

Relational database management systems (DBMSs) are used ◮This topic is discussed in

more detail in Chapter 4.

throughout business as well as the sciences to organize, process,

10 1. Introduction

and search large collections of structured data. NoSQL DBMSs are

used for data that is extremely large and/or unstructured, such as

collections of web pages, social media data (e.g., Twitter messages),

and clinical notes. Extensions to these systems and also special-

ized single-purpose DBMSs provide support for data types that are

not easily handled in statistical packages such as geospatial data,

networks, and graphs.

Open source programming systems such as Python (used ex-

tensively throughout this book) and R provide high-quality imple-

mentations of numerous data analysis and visualization methods,

from regression to statistics, text analysis, network analysis, and

much more. Finally, parallel computing systems such as Hadoop

and Spark can be used to harness parallel computer clusters for

extremely large data sets and computationally intensive analyses.

These various components may not always work together as

smoothly as do integrated packages such as SAS, SPSS, and Stata,

but they allow researchers to take on problems of great scale and

complexity. Furthermore, they are developing at a tremendous rate

as the result of work by thousands of people worldwide. For these

reasons, the modern social scientist needs to be familiar with their

characteristics and capabilities.

1.6 The book’s “use case”

This book is about the uses of big data in social science. Our focus

is on working through the use of data as a social scientist normally

approaches research. That involves thinking through how to use

such data to address a question from beginning to end, and thereby

learning about the associated tools—rather than simply engaging in

coding exercises and then thinking about how to apply them to a

potpourri of social science examples.

There are many examples of the use of big data in social science

research, but relatively few that feature all the different aspects

that are covered in this book. As a result, the chapters in the book

draw heavily on a use case based on one of the first large-scale big

data social science data infrastructures. This infrastructure, based

on UMETRICS*data housed at the University of Michigan’s Insti-

⋆UMETRICS: Universi-

ties Measuring the Impact

of Research on Innovation

and Science [228]

tute for Research on Innovation and Science (IRIS) and enhanced

◮iris.isr.umich.edu with data from the US Census Bureau, provides a new quantitative

analysis and understanding of science policy based on large-scale

computational analysis of new types of data.

1.6. The book’s “use case” 11

The infrastructure was developed in response to a call from the

President’s Science Advisor (Jack Marburger) for a science of science

policy [250]. He wanted a scientific response to the questions that

he was asked about the impact of investments in science.

Example: The Science of Science Policy

Marburger wrote [250]: “How much should a nation spend on science? What

kind of science? How much from private versus public sectors? Does demand for

funding by potential science performers imply a shortage of funding or a surfeit

of performers? These and related science policy questions tend to be asked and

answered today in a highly visible advocacy context that makes assumptions that

are deserving of closer scrutiny. A new ‘science of science policy’ is emerging, and

it may offer more compelling guidance for policy decisions and for more credible

advocacy. . . .

“Relating R&D to innovation in any but a general way is a tall order, but not

a hopeless one. We need econometric models that encompass enough variables

in a sufficient number of countries to produce reasonable simulations of the effect

of specific policy choices. This need won’t be satisfied by a few grants or work-

shops, but demands the attention of a specialist scholarly community. As more

economists and social scientists turn to these issues, the effectiveness of science

policy will grow, and of science advocacy too.”

Responding to this policy imperative is a tall order, because it in-

volves using all the social science and computer science tools avail-

able to researchers. The new digital technologies can be used to

capture the links between the inputs into research, the way in which

those inputs are organized, and the subsequent outputs [396,415].

The social science questions that are addressable with this data in-

frastructure include the effect of research training on the placement

and earnings of doctoral recipients, how university trained scien-

tists and engineers affect the productivity of the firms they work for,

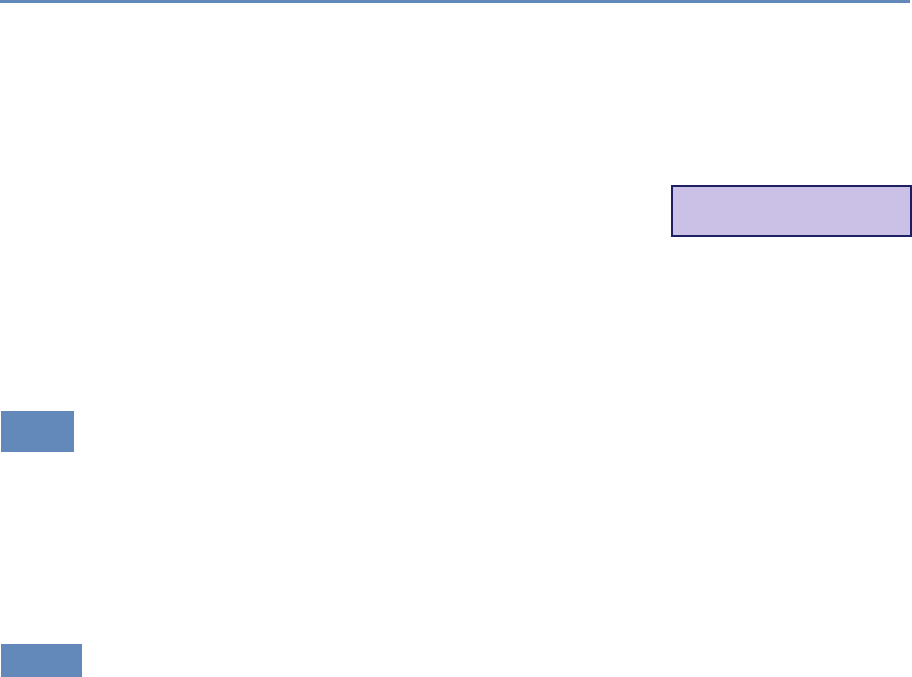

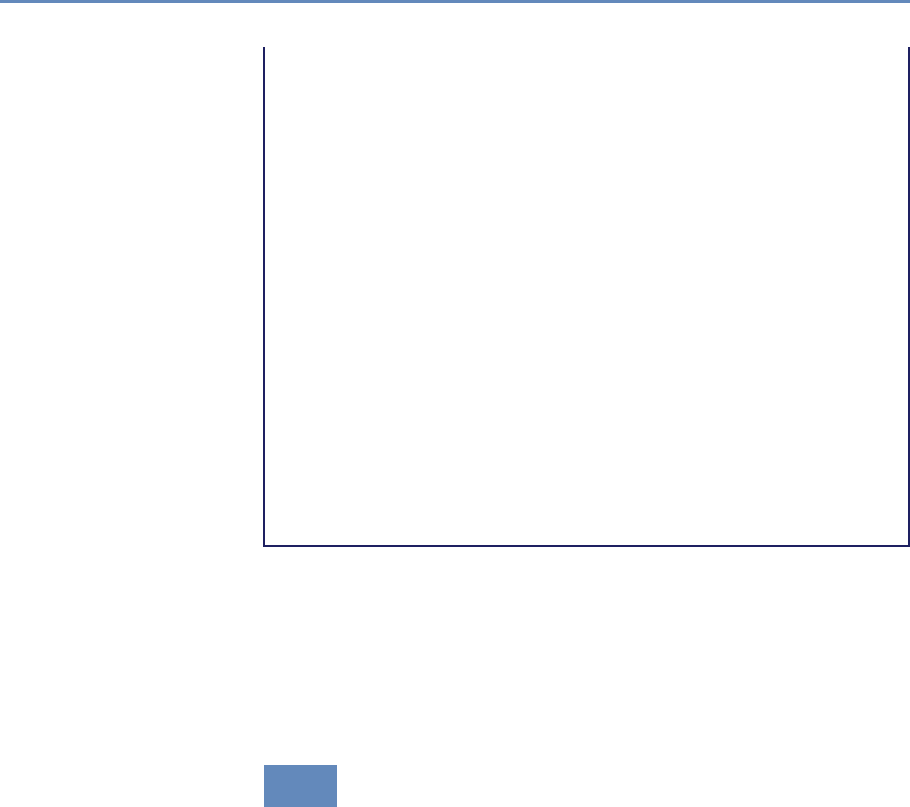

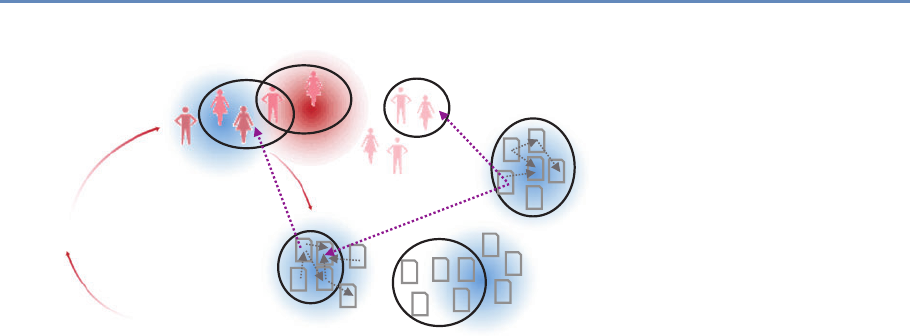

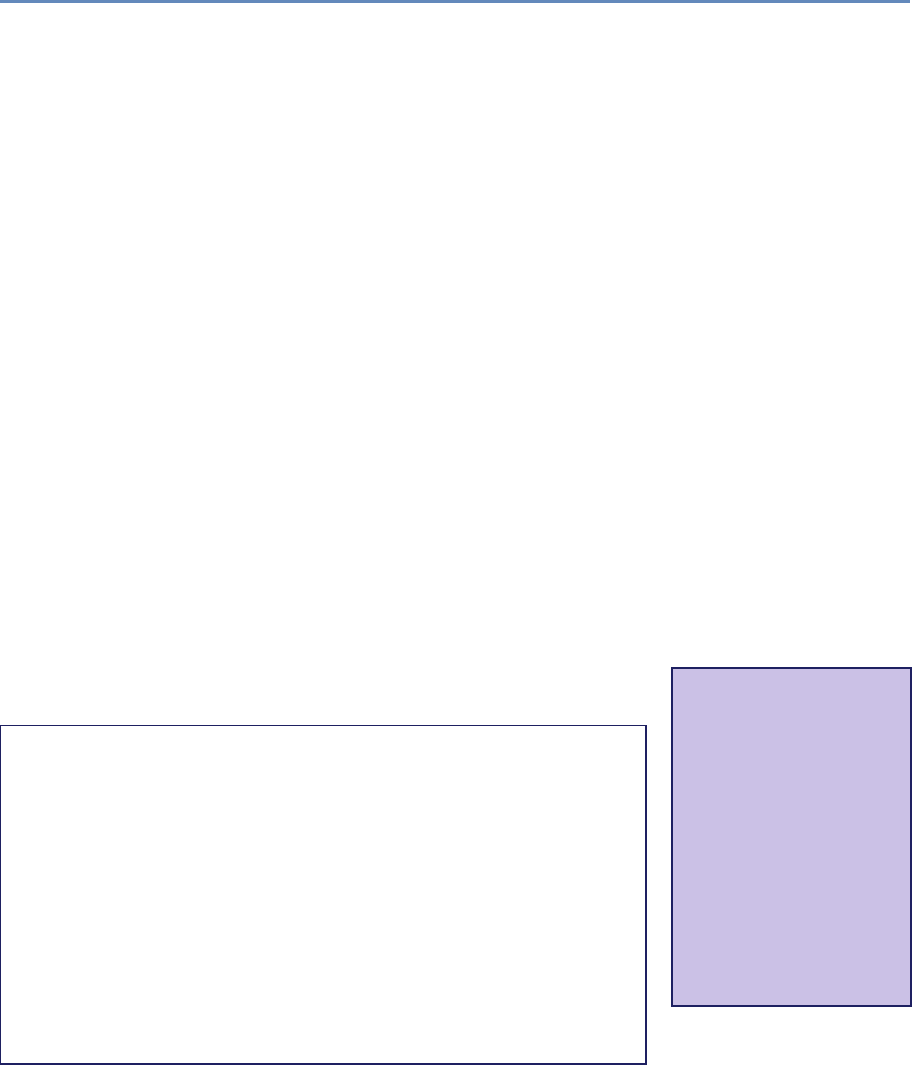

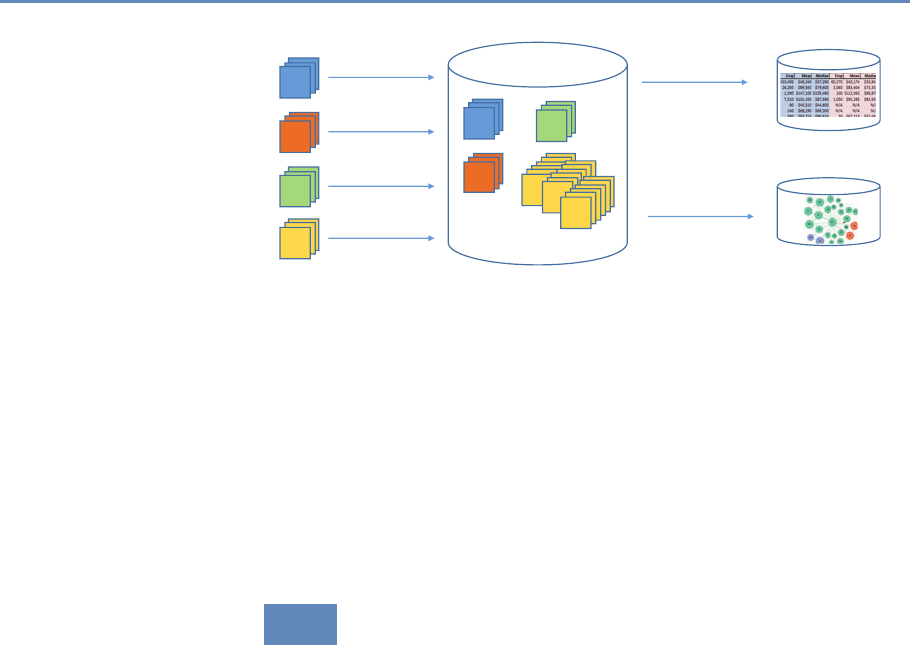

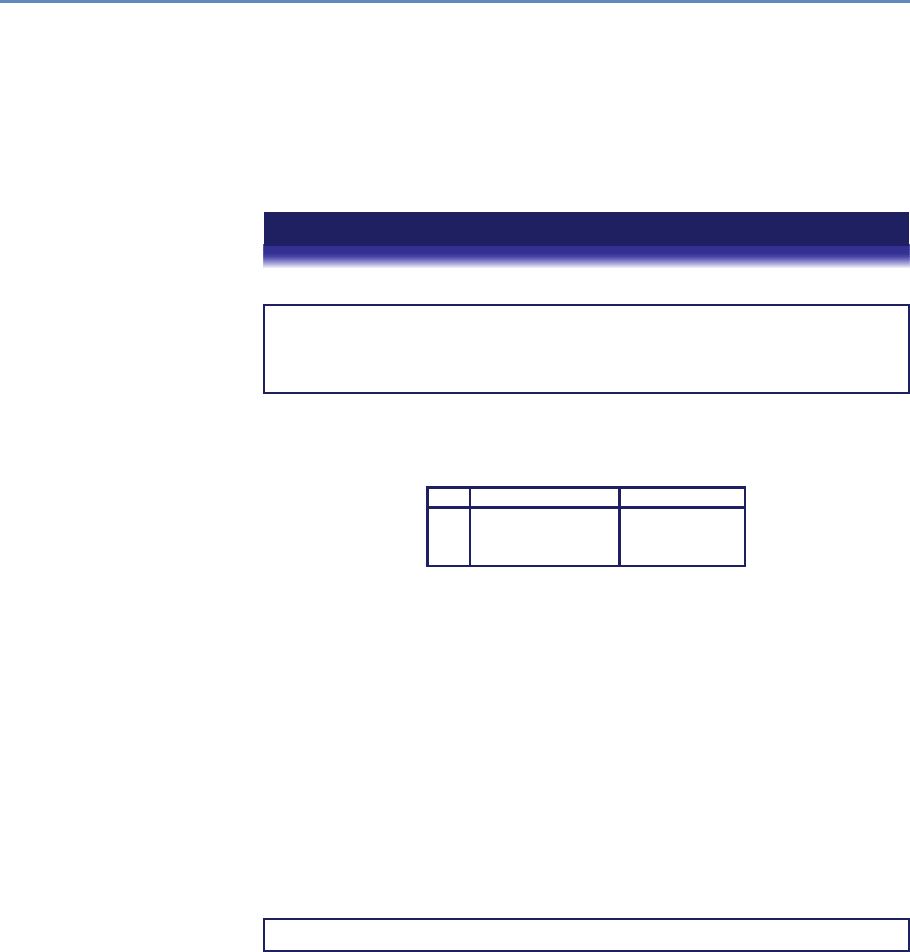

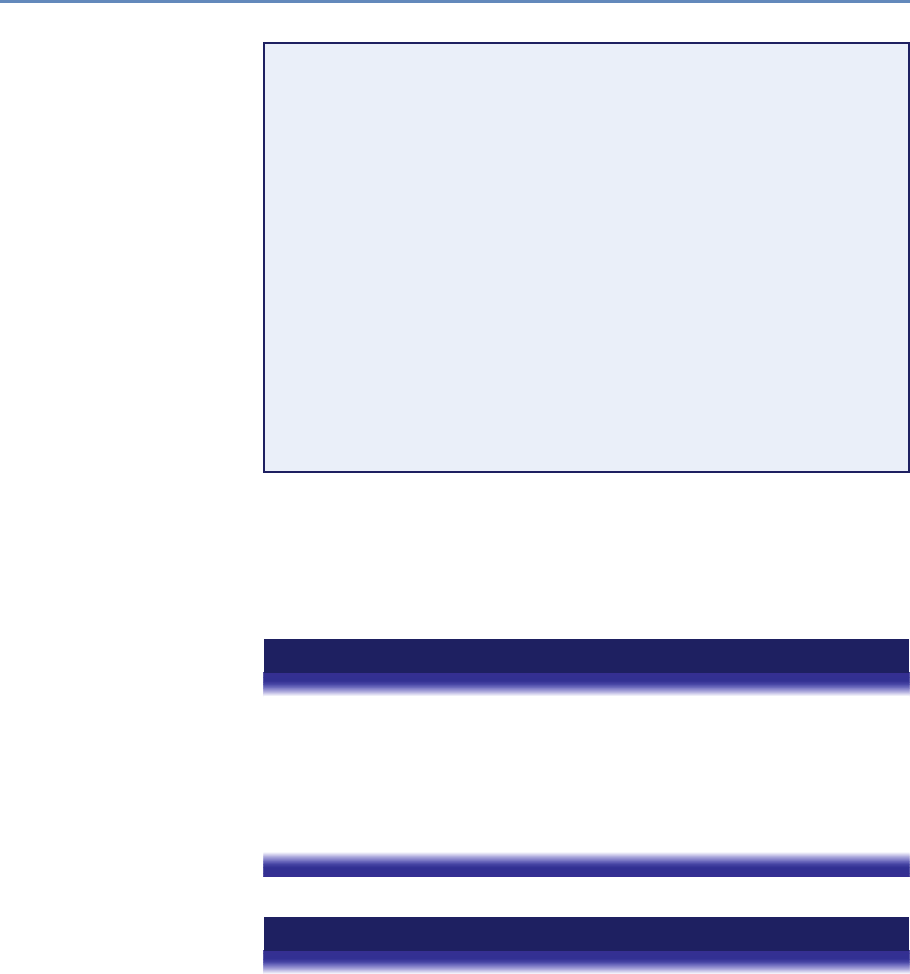

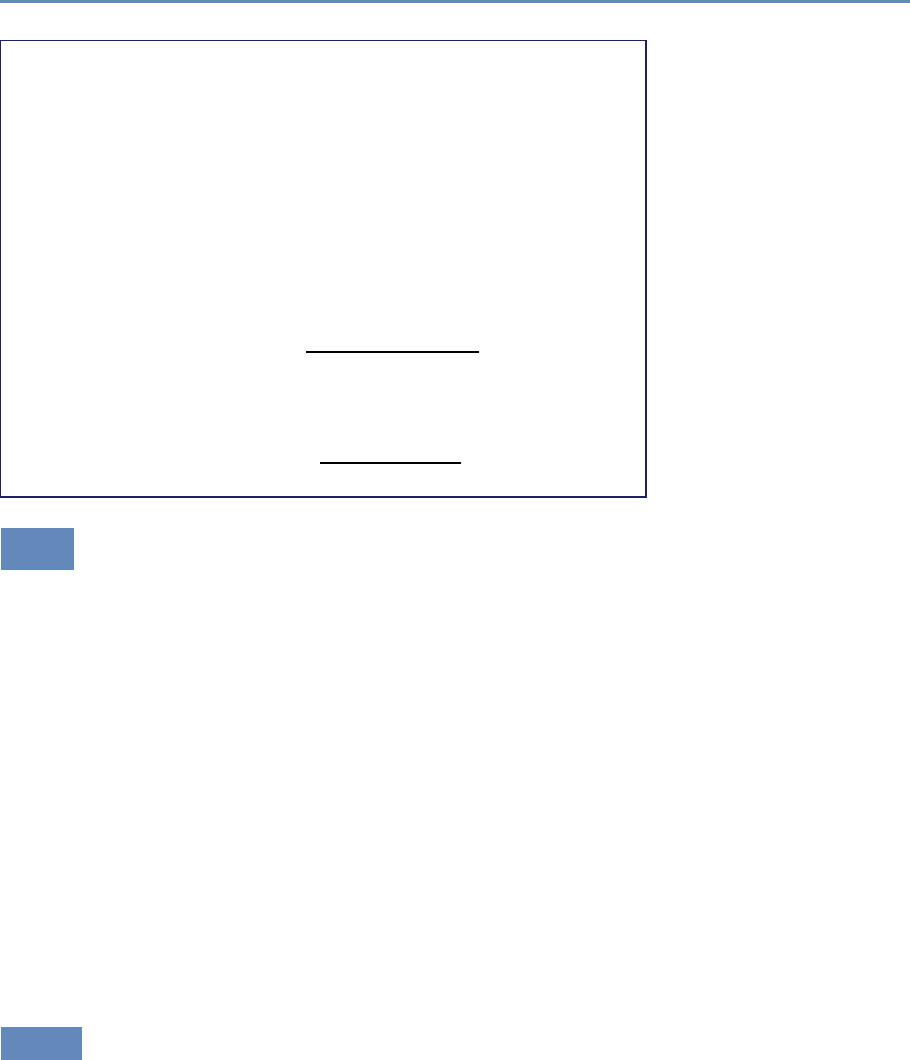

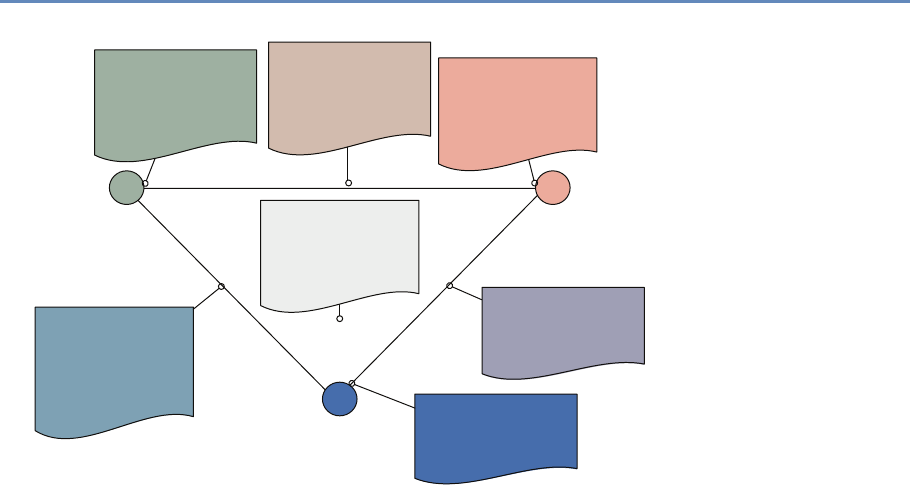

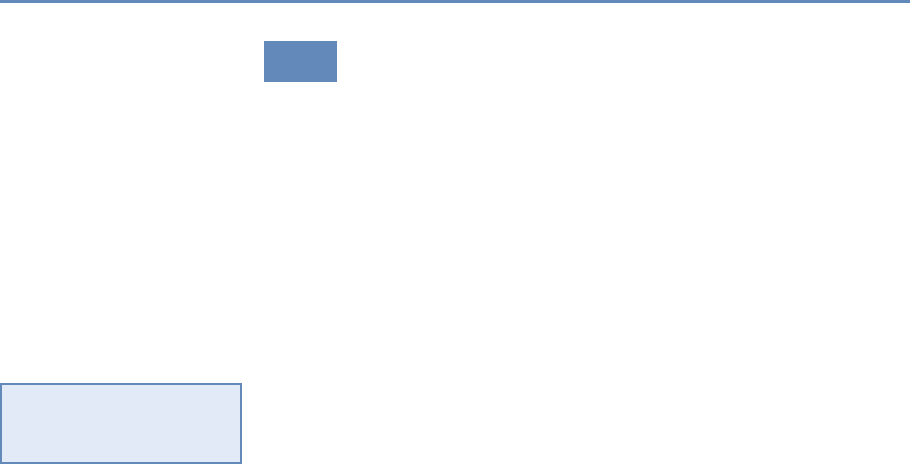

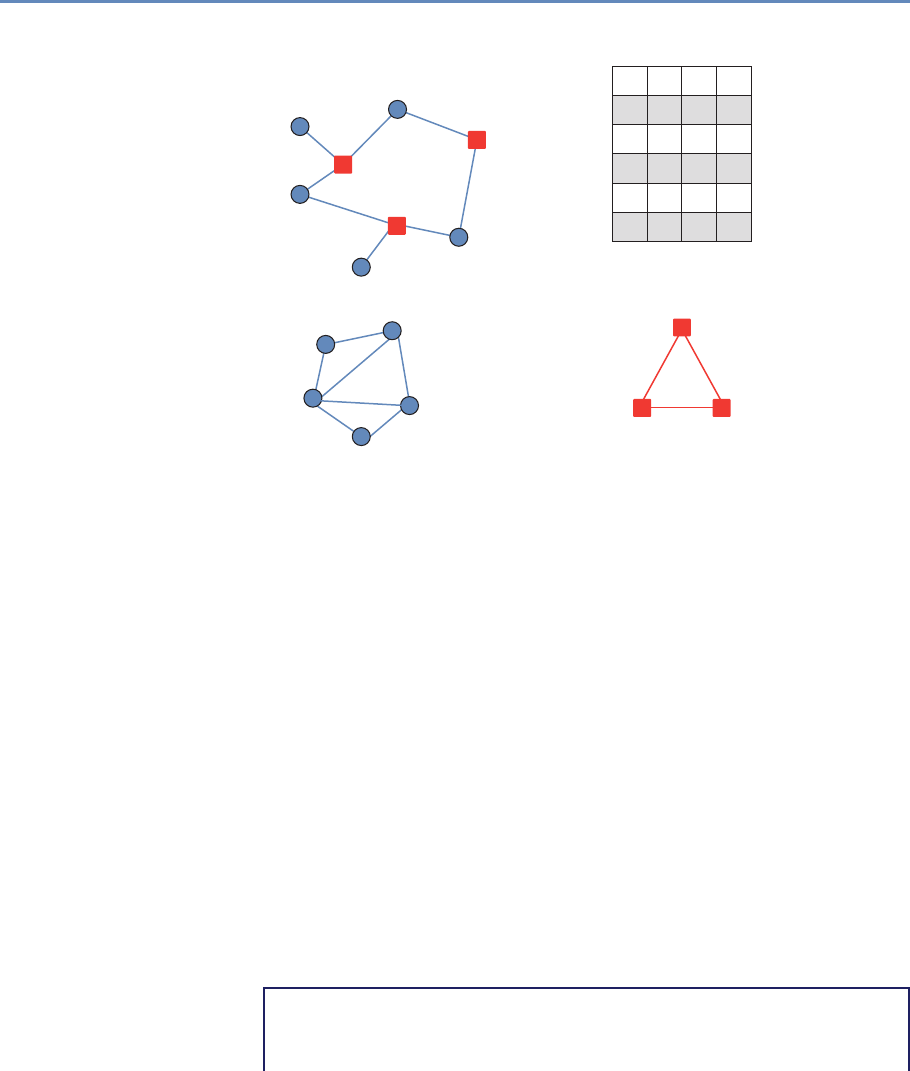

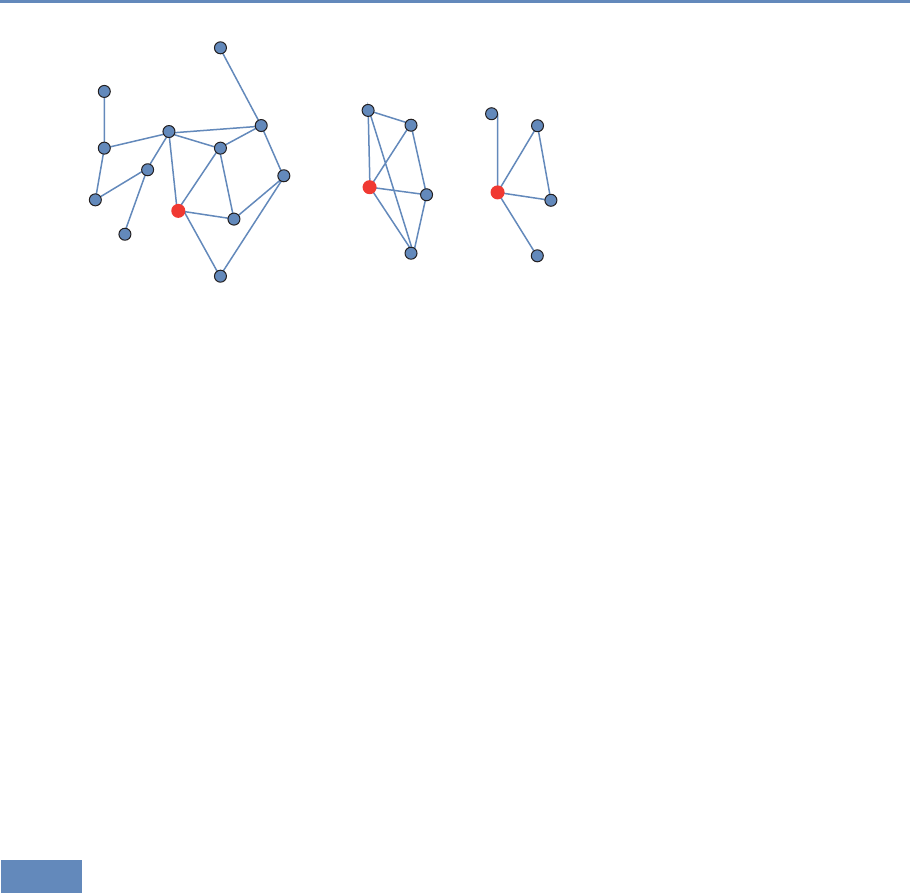

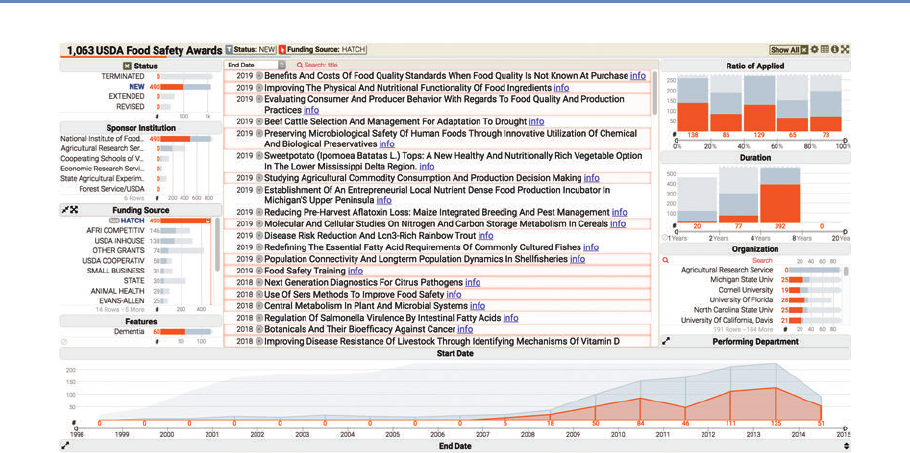

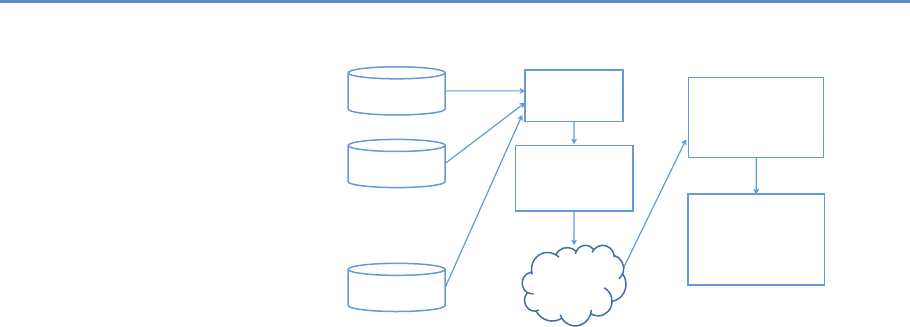

and the return on investments in research. Figure 1.2 provides an

abstract representation of the empirical approach that is needed:

data about grants, the people who are funded on grants, and the

subsequent scientific and economic activities.

First, data must be captured on what is funded, and since the

data are in text format, computational linguistics tools must be

applied (Chapter 7). Second, data must be captured on who is

funded, and how they interact in teams, so network tools and ana-

lysis must be used (Chapter 8). Third, information about the type of

results must be gleaned from the web and other sources (Chapter 2).

12 1. Introduction

Co-Author Collaborate

Train

Pays for &

Is Awarded to

People

Funding

Institutions Products

E

m

p

l

o

y

A

w

a

r

d

e

d

t

o

S

u

p

p

o

r

t

s

P

r

o

d

u

c

e

&

u

s

e

Figure 1.2. A visualization of the complex links between what and who is funded, and the results; tracing the direct

link between funding and results is misleading and wrong

Finally, the disparate complex data sets need to be stored in data-

bases (Chapter 4), integrated (Chapter 3), analyzed (Chapter 6), and

used to make inferences (Chapter 10).

The use case serves as the thread that ties many of the ideas

together. Rather than asking the reader to learn how to code “hello

world,” we build on data that have been put together to answer a

real-world question, and provide explicit examples based on that

data. We then provide examples that show how the approach gen-

eralizes.

For example, the text analysis chapter (Chapter 7) shows how

to use natural language processing to describe what research is

being done, using proposal and award text to identify the research

topics in a portfolio [110, 368]. But then it also shows how the

approach can be used to address a problem that is not just limited

to science policy—the conversion of massive amounts of knowledge

that is stored in text to usable information.

1.7. The structure of the book 13

Similarly, the network analysis chapter (Chapter 8) gives specific

examples using the UMETRICS data and shows how such data can

be used to create new units of analysis—the networks of researchers

who do science, and the networks of vendors who supply research

inputs. It also shows how networks can be used to study a wide

variety of other social science questions.

In another example, we use APIs*provided by publishers to de- ⋆Application Programming

Interfaces

scribe the results generated by research funding in terms of pub-

lications and other measures of scientific impact, but also provide

code that can be repurposed for many similar APIs.

And, of course, since all these new types of data are provided

in a variety of different formats, some of which are quite large (or

voluminous), and with a variety of different timestamps (or velocity),

we discuss how to store the data in different types of data formats.

1.7 The structure of the book

We organize the book in three parts, based around the way social

scientists approach doing research. The first set of chapters ad-

dresses the new ways to capture, curate, and store data. The sec-

ond set of chapters describes what tools are available to process and

classify data. The last set deals with analysis and the appropriate

handling of data on individuals and organizations.

1.7.1 Part I: Capture and curation

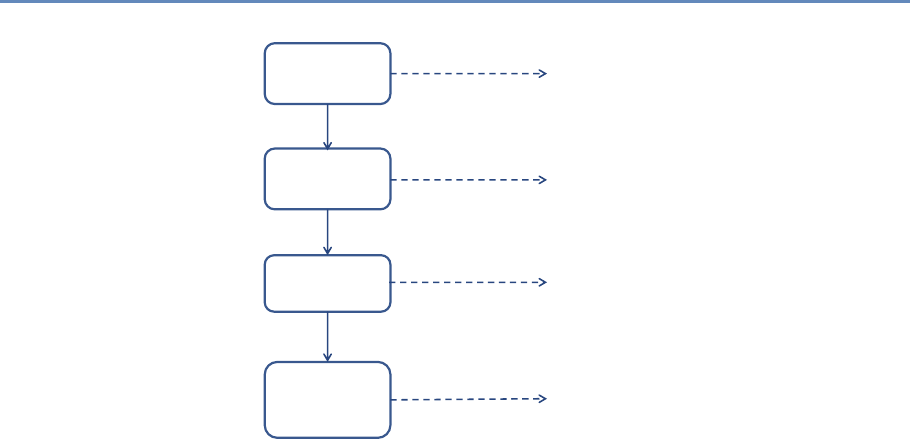

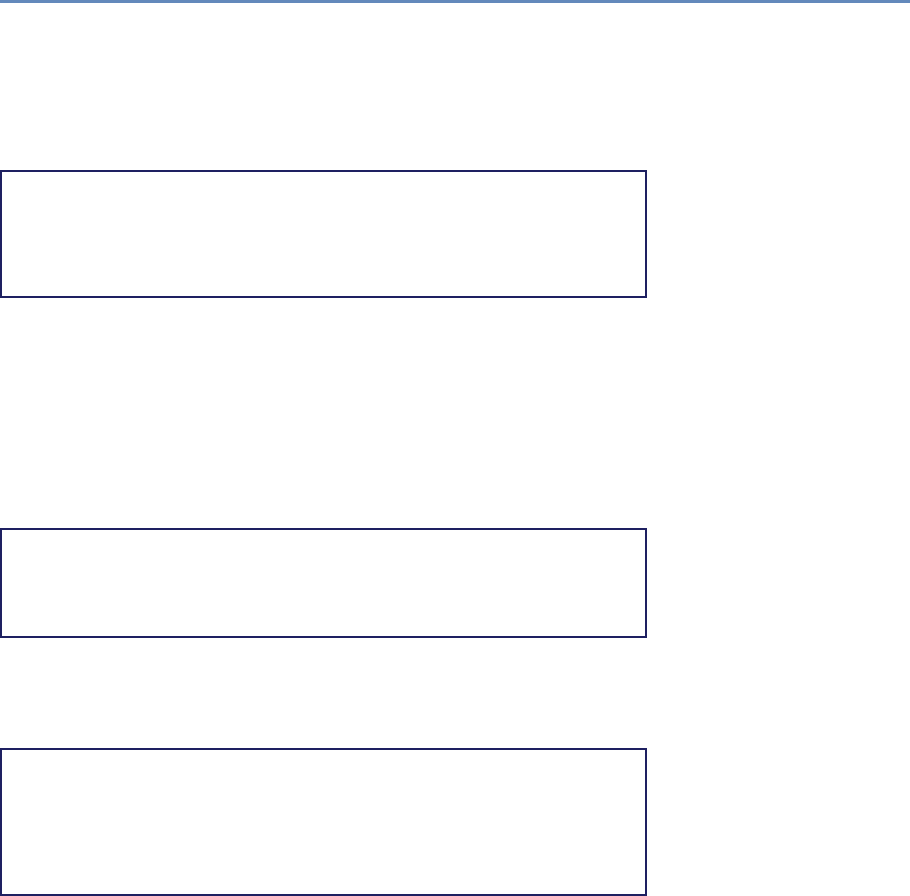

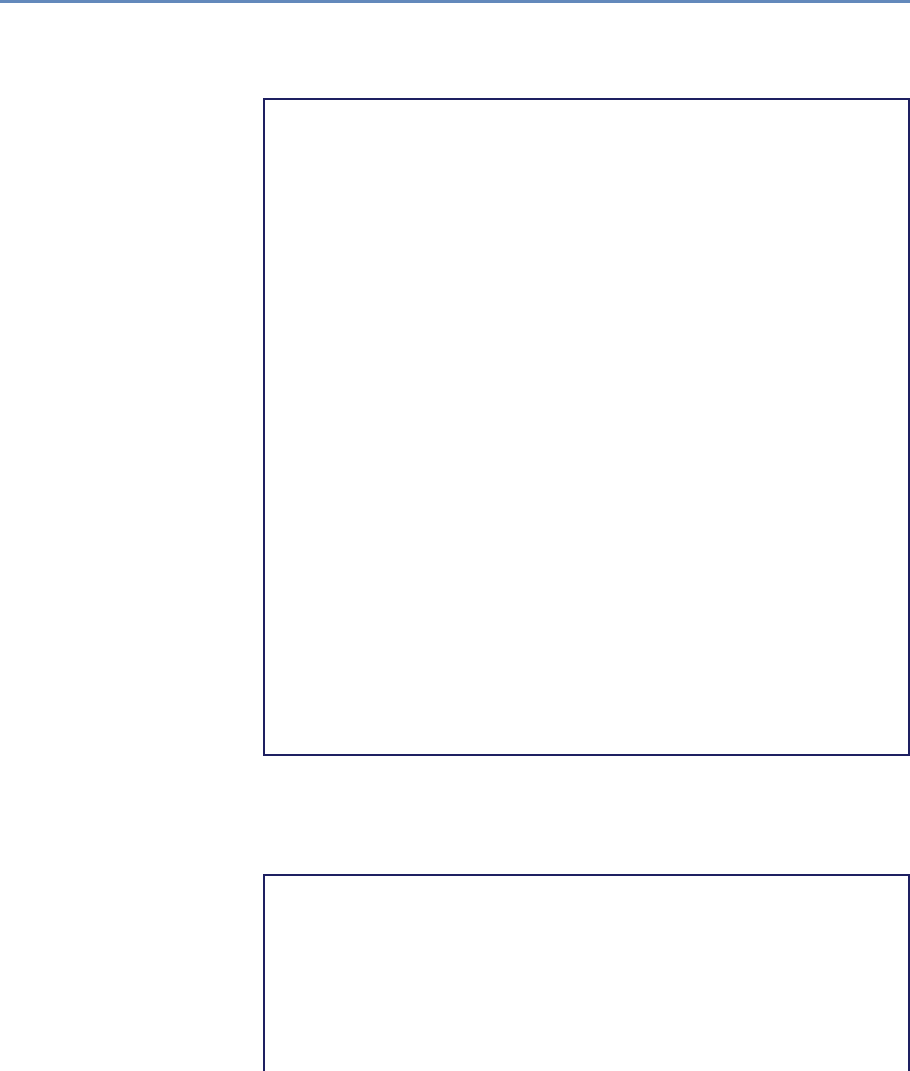

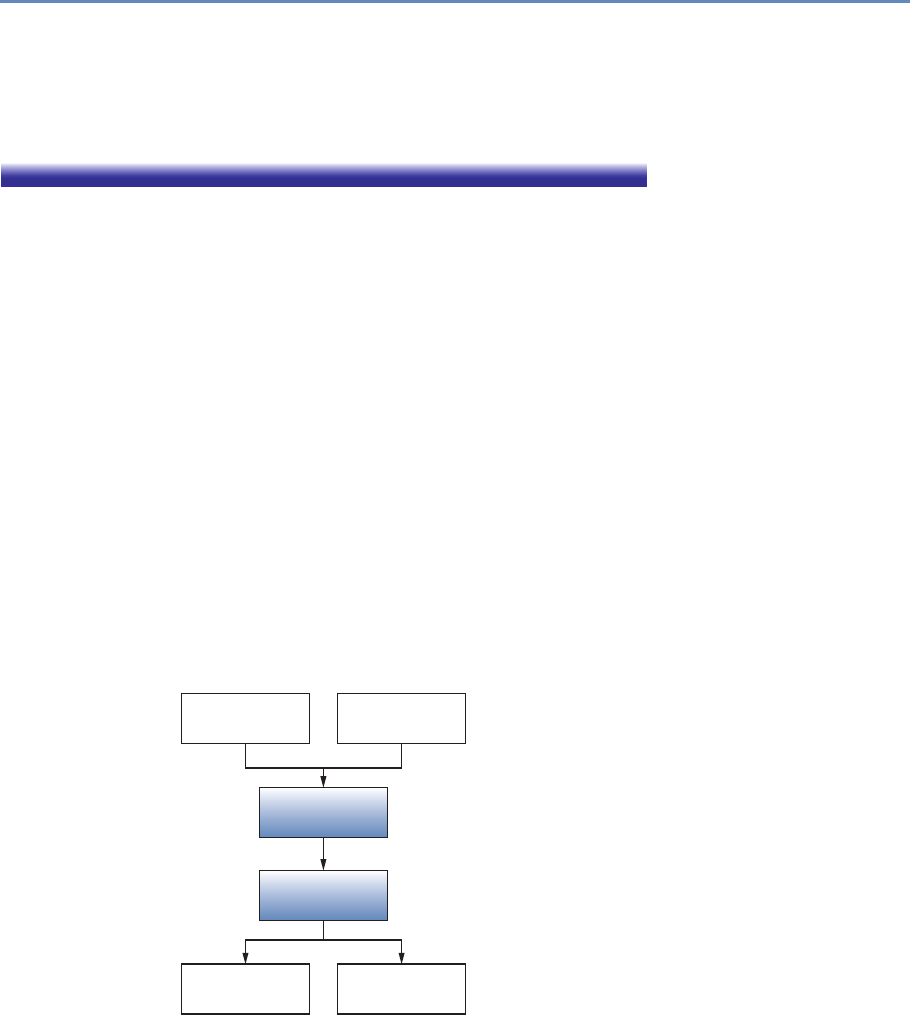

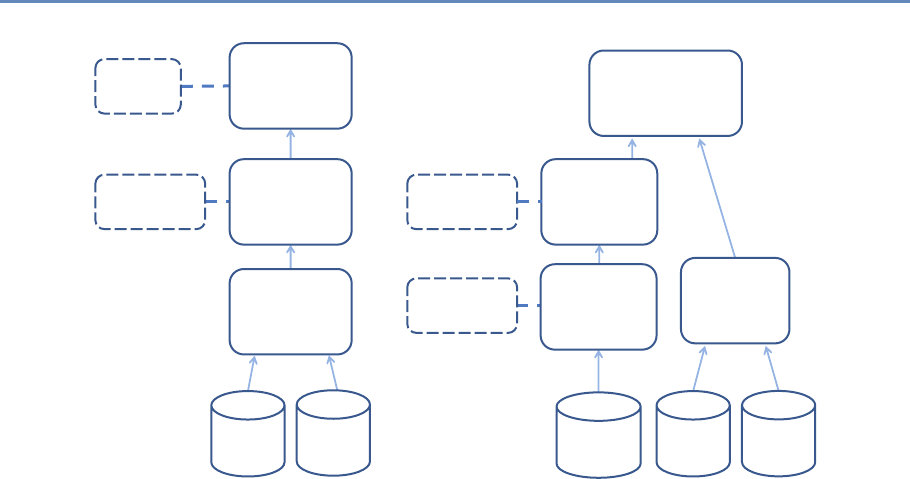

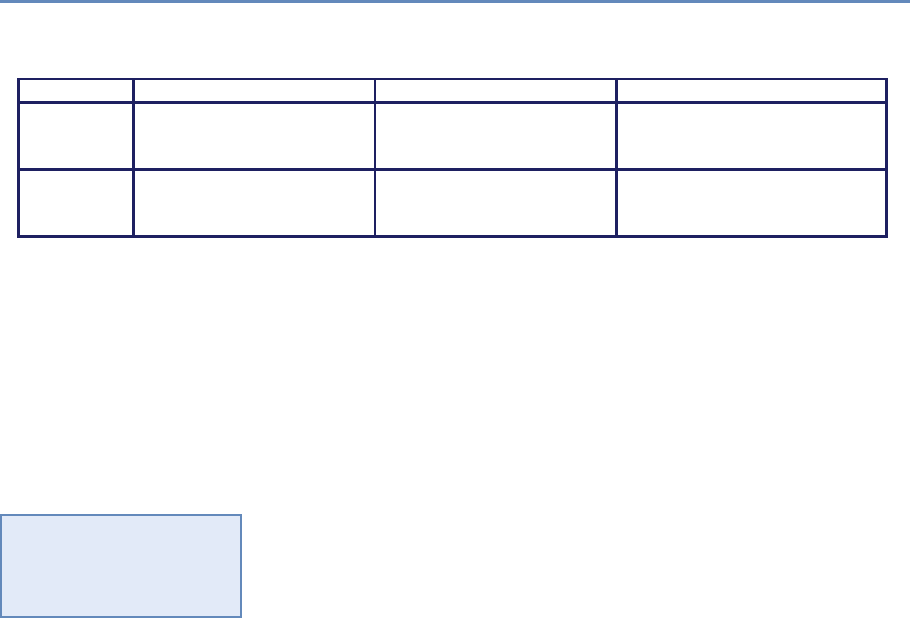

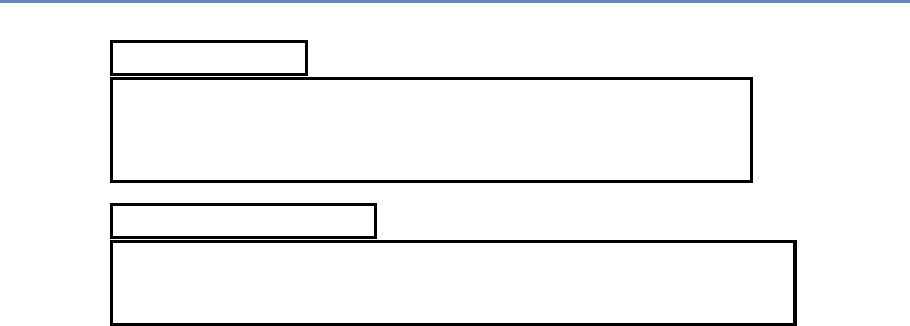

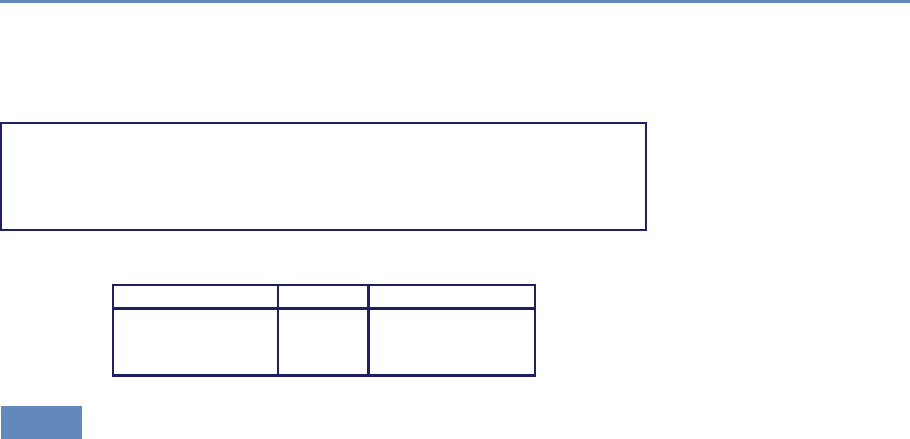

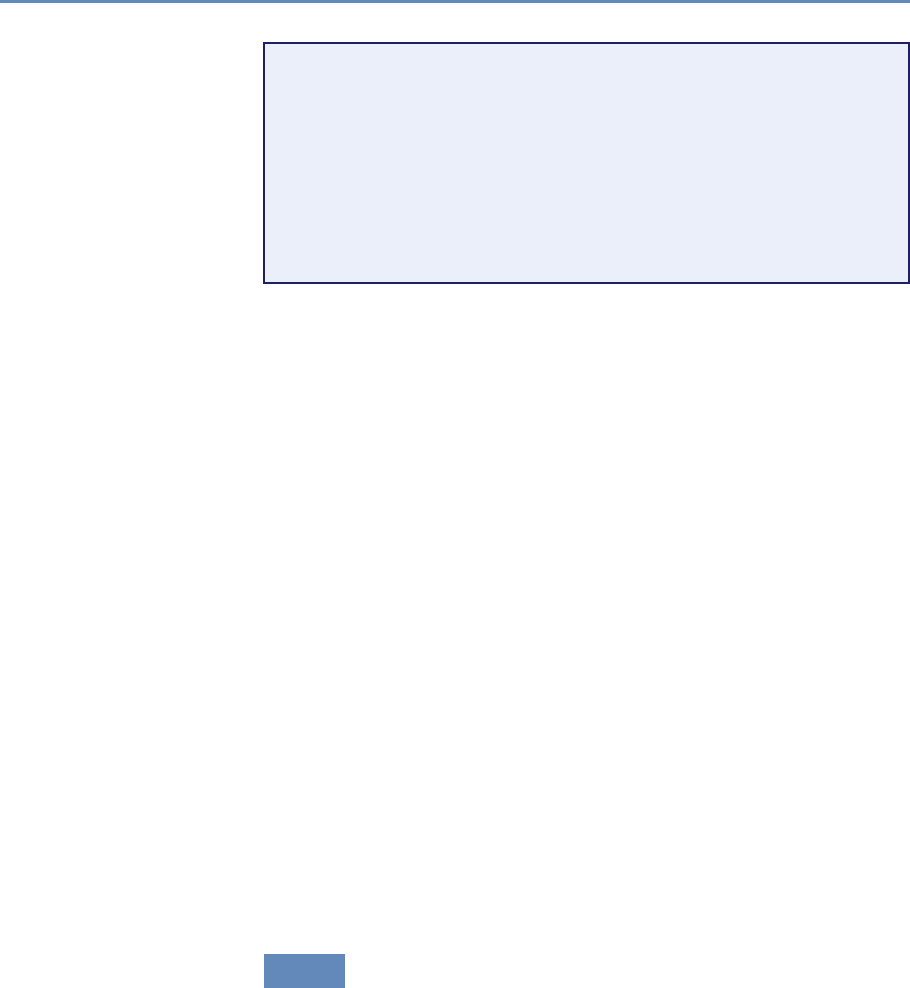

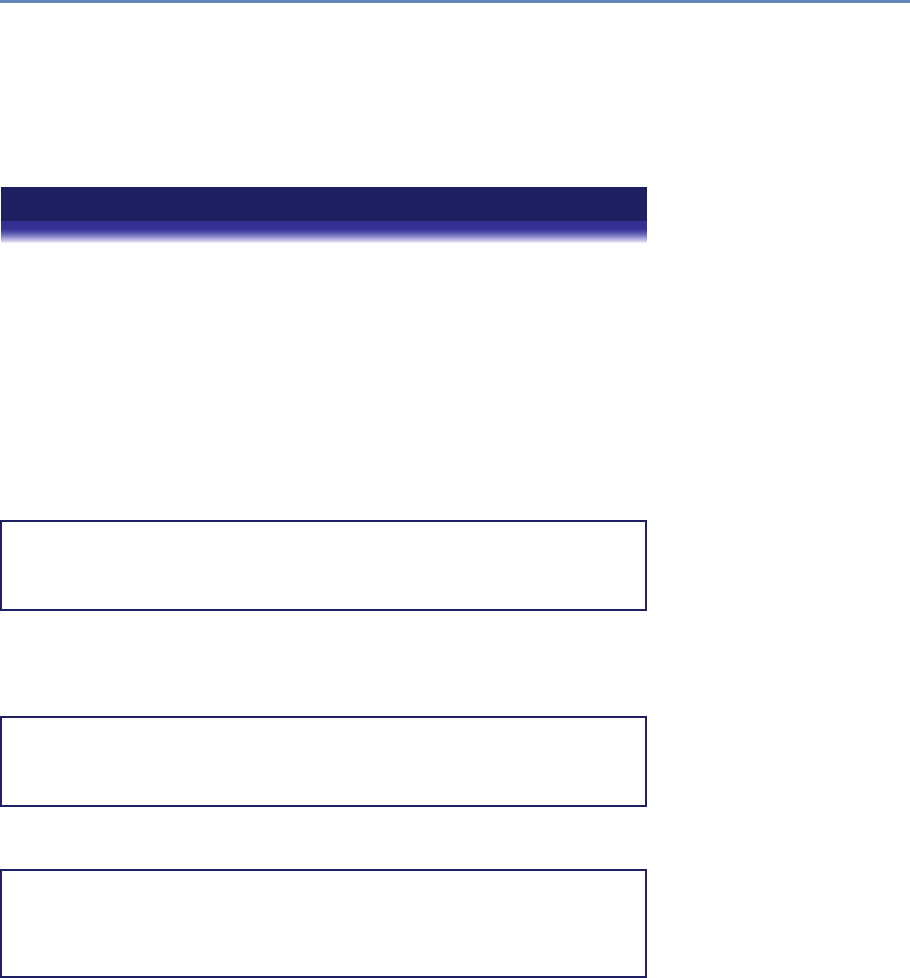

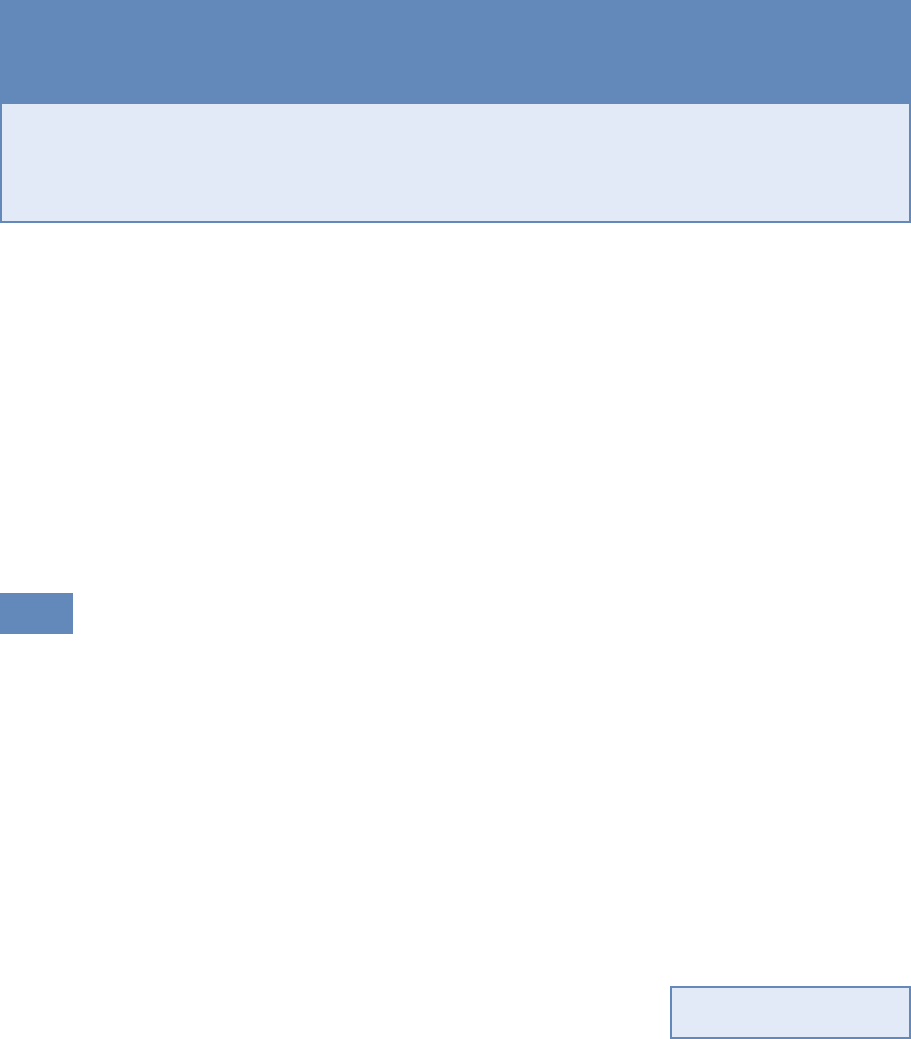

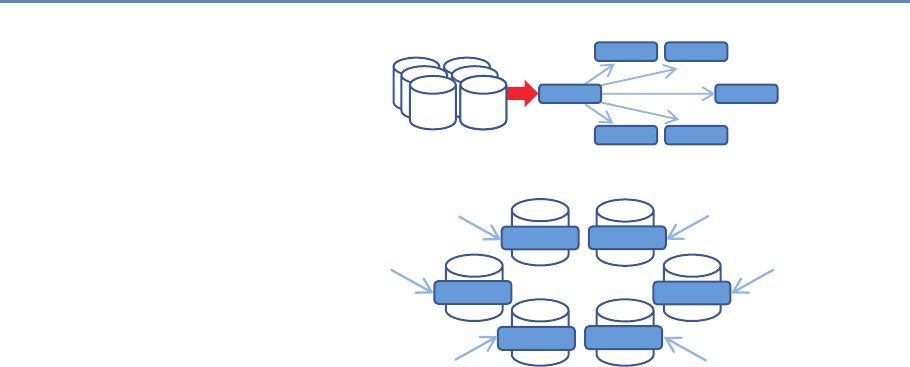

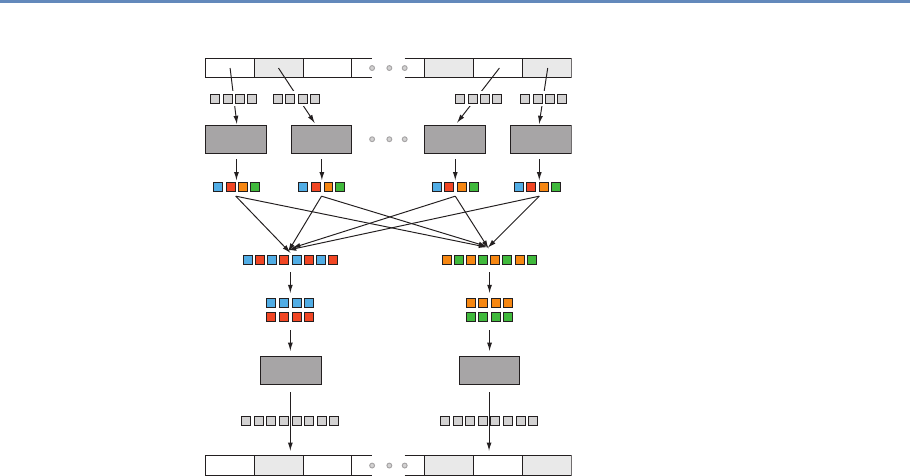

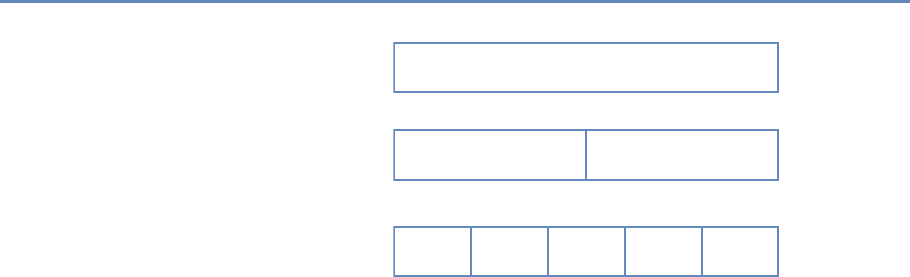

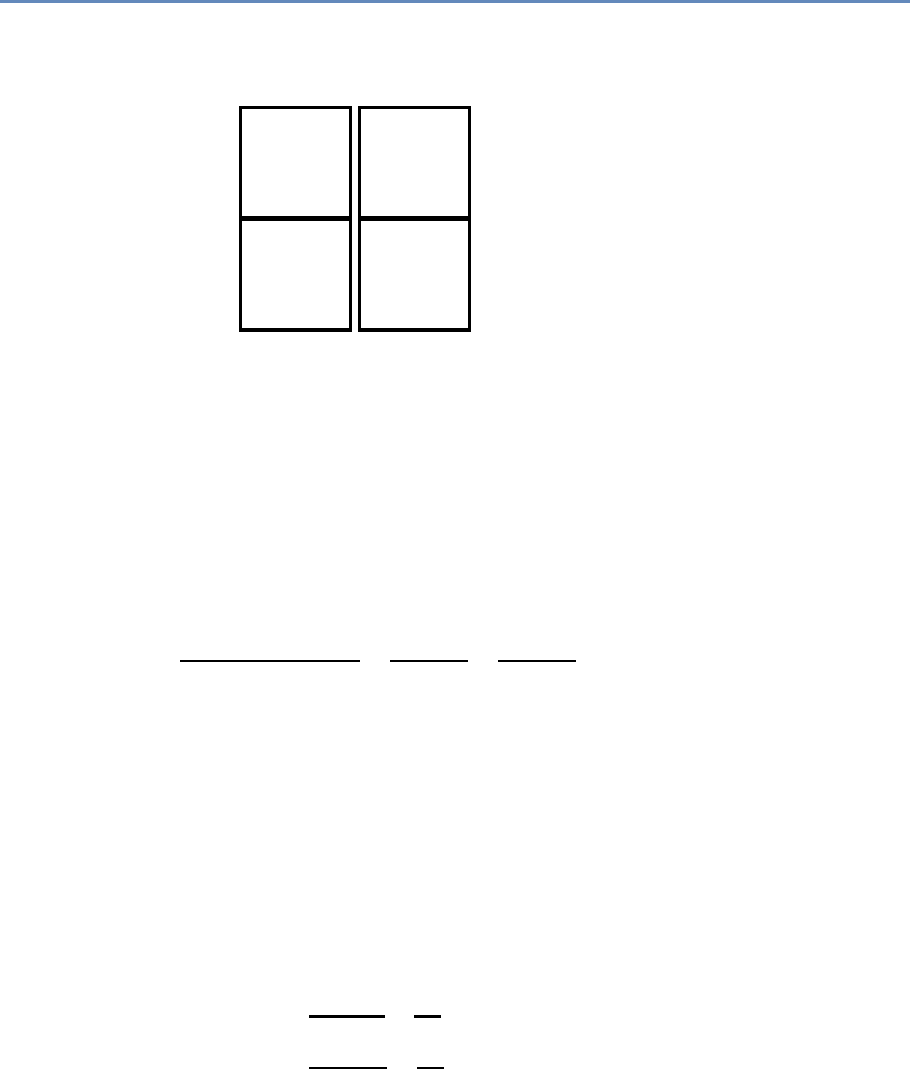

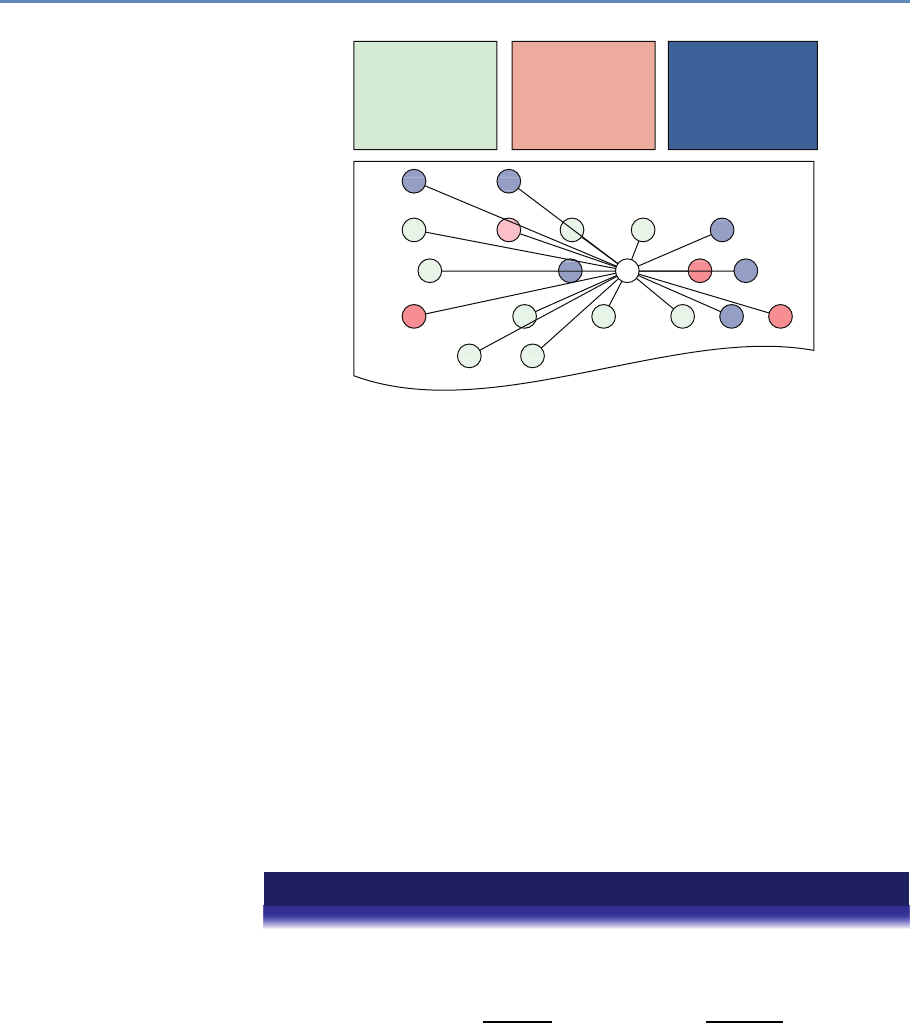

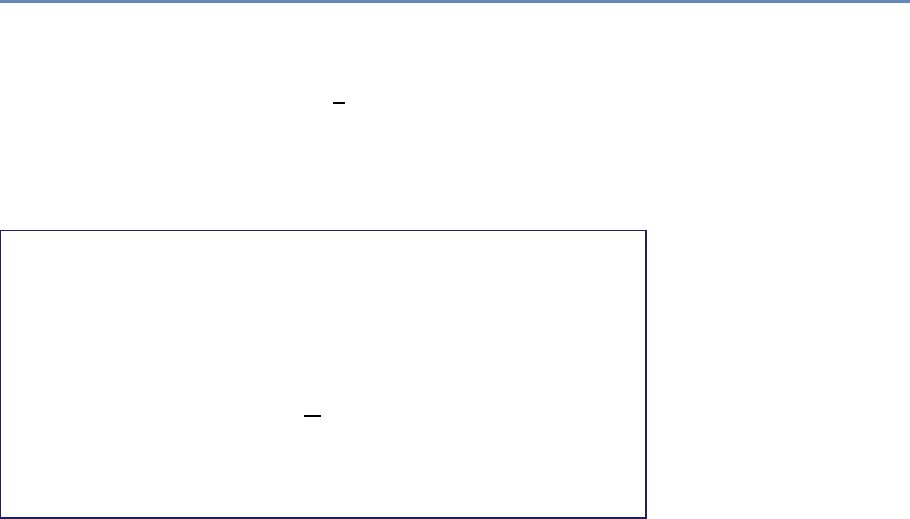

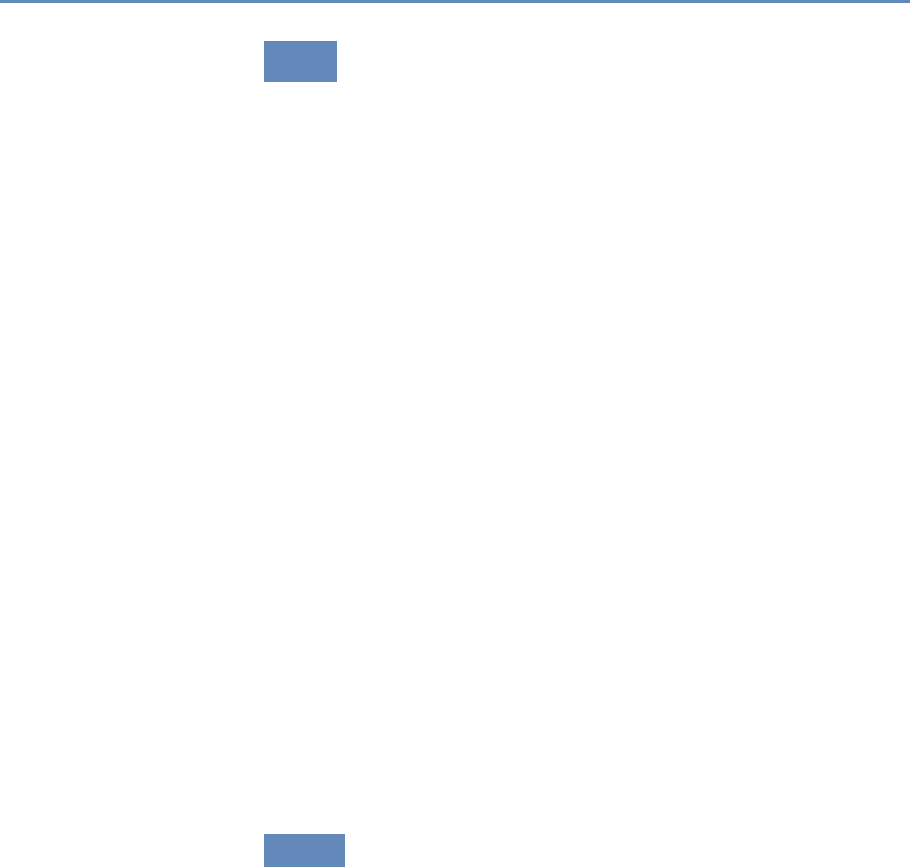

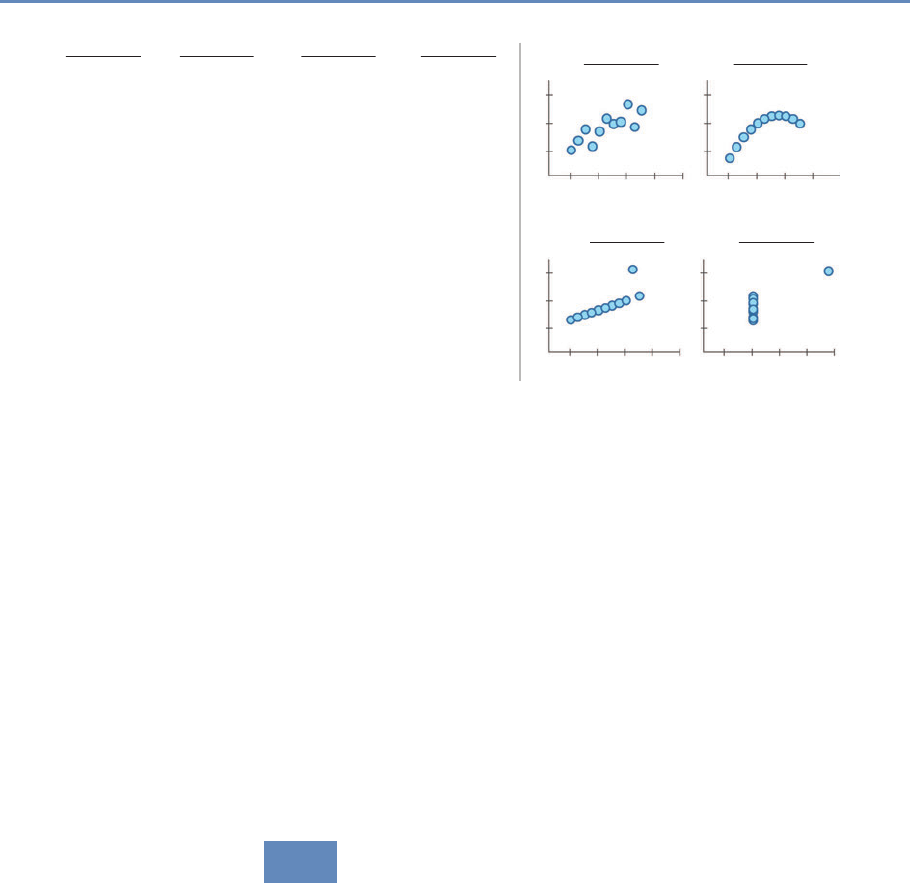

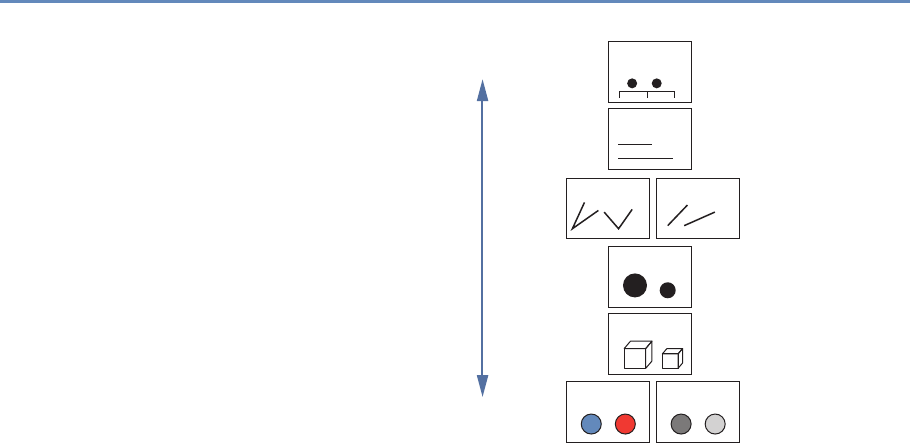

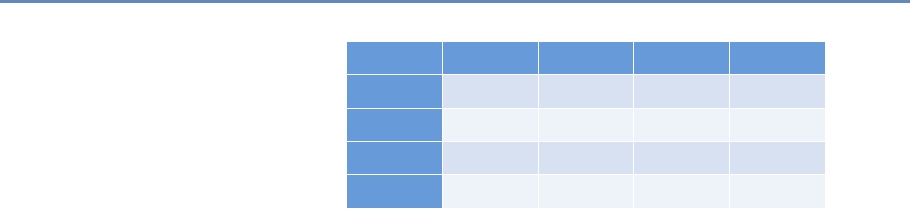

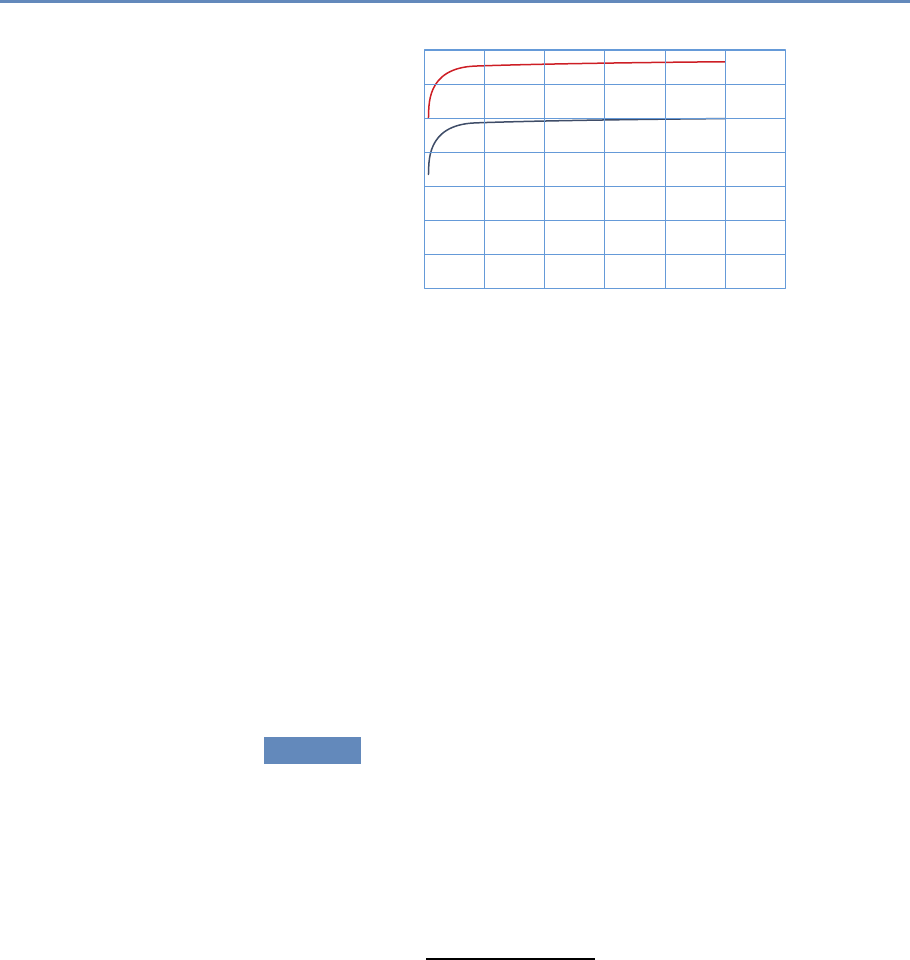

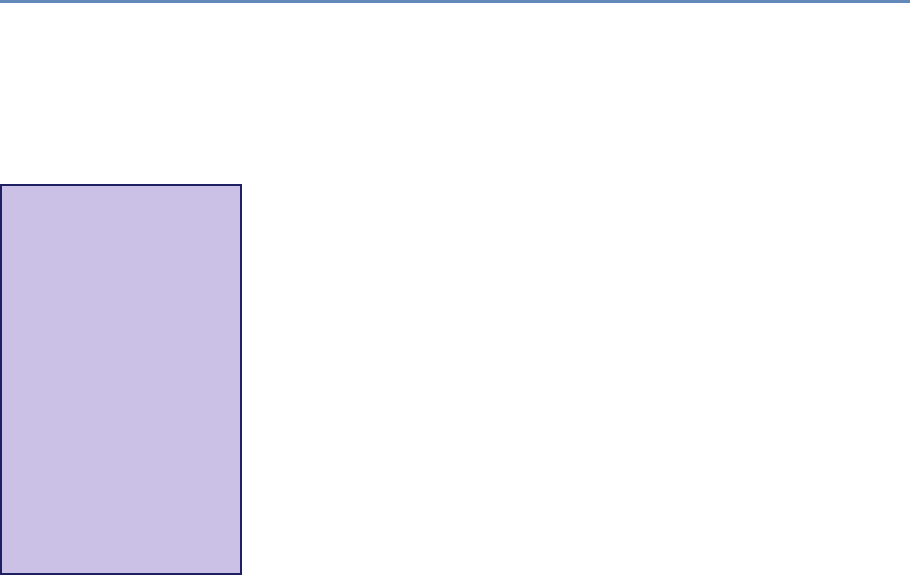

The four chapters in Part I (see Figure 1.3) tell you how to capture

and manage data.

Chapter 2 describes how to extract information from social me-

dia about the transmission of knowledge. The particular applica-

tion will be to develop links to authors’ articles on Twitter using

PLOS articles and to pull information about authors and articles

from web sources by using an API. You will learn how to retrieve

link data from bookmarking services, citations from Crossref, links

from Facebook, and information from news coverage. In keep-

ing with the social science grounding that is a core feature of the

book, the chapter discusses what data can be captured from online

sources, what is potentially reliable, and how to manage data quality

issues.

Big data differs from survey data in that we must typically com-

bine data from multiple sources to get a complete picture of the

activities of interest. Although computer scientists may sometimes

14 1. Introduction

API and Web

Scraping

Record

Linkage

Storing Data

Processing

Large

Data Sets

Chapter 2: Dierent ways of collecting data

Chapter 3: Combining dierent data sets

Chapter 4: Ingest, query and export data

Chapter 5: Output (creating innovation measures)

Figure 1.3. The four chapters of Part I focus on data capture and curation

simply “mash” data sets together, social scientists are rightfully

concerned about issues of missing links, duplicative links, and

erroneous links. Chapter 3 provides an overview of traditional

rule-based and probabilistic approaches to data linkage, as well

as the important contributions of machine learning to the linkage

problem.

Once data have been collected and linked into different files, it

is necessary to store and organize it. Social scientists are used to

working with one analytical file, often in statistical software tools

such as SAS or Stata. Chapter 4, which may be the most impor-

tant chapter in the book, describes different approaches to stor-

ing data in ways that permit rapid and reliable exploration and

analysis.

Big data is sometimes defined as data that are too big to fit onto

the analyst’s computer. Chapter 5 provides an overview of clever

programming techniques that facilitate the use of data (often using

parallel computing). While the focus is on one of the most widely

used big data programming paradigms and its most popular imple-

mentation, Apache Hadoop, the goal of the chapter is to provide a

conceptual framework to the key challenges that the approach is

designed to address.

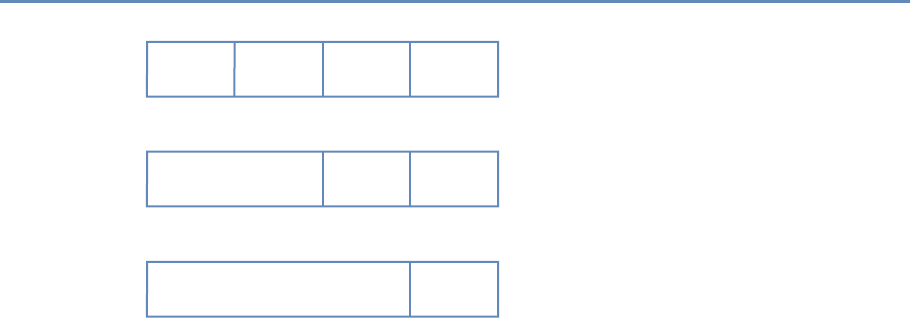

1.7. The structure of the book 15

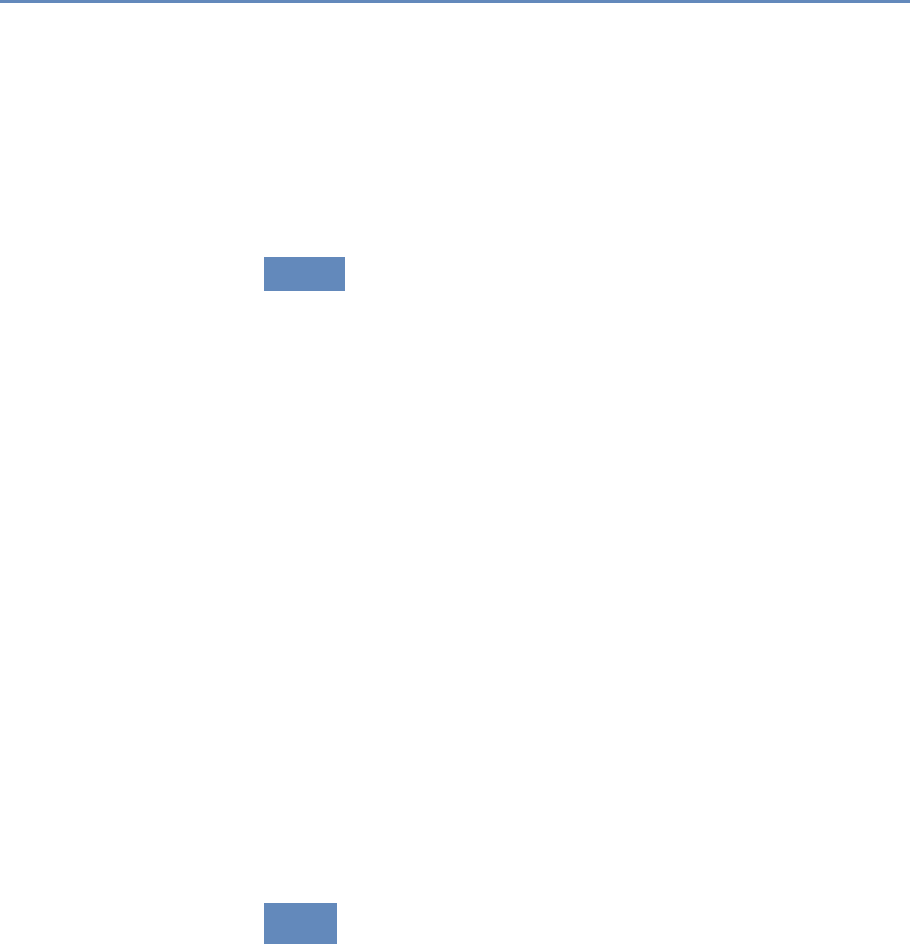

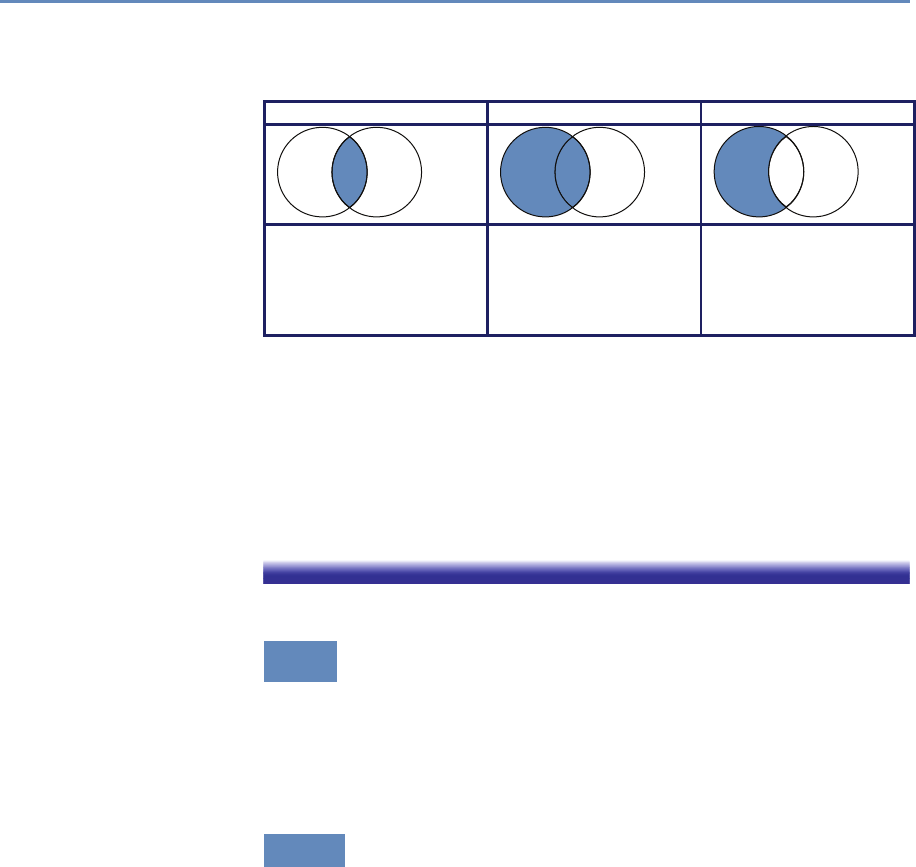

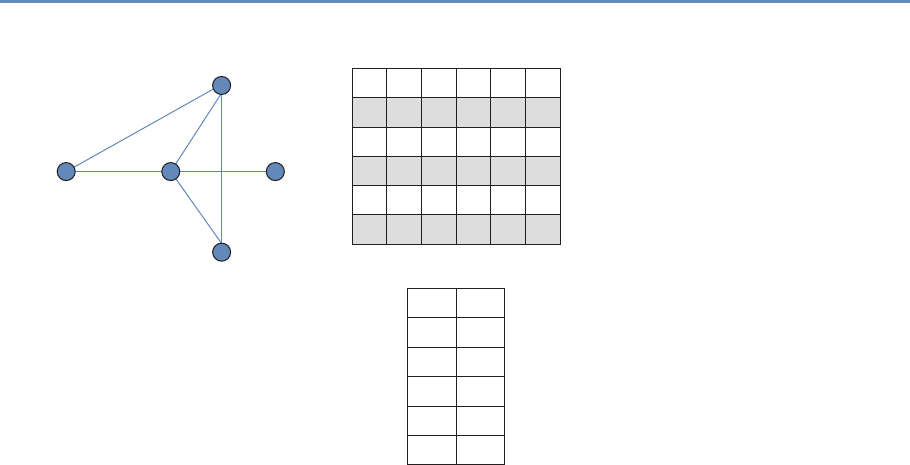

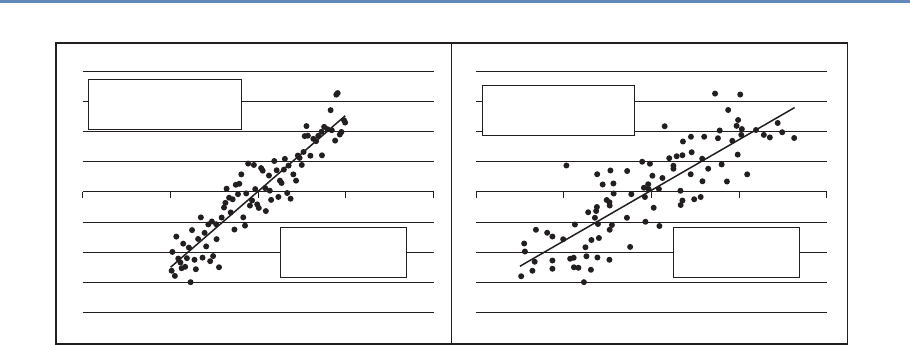

Machine

Learning

Text

Analysis

Networks

Chapter 6: Classifying data in new ways

Chapter 7: Creating new data from text

Chapter 8: Creating new measures of

social and economic activity

Figure 1.4. The four chapters in Part II focus on data modeling and analysis

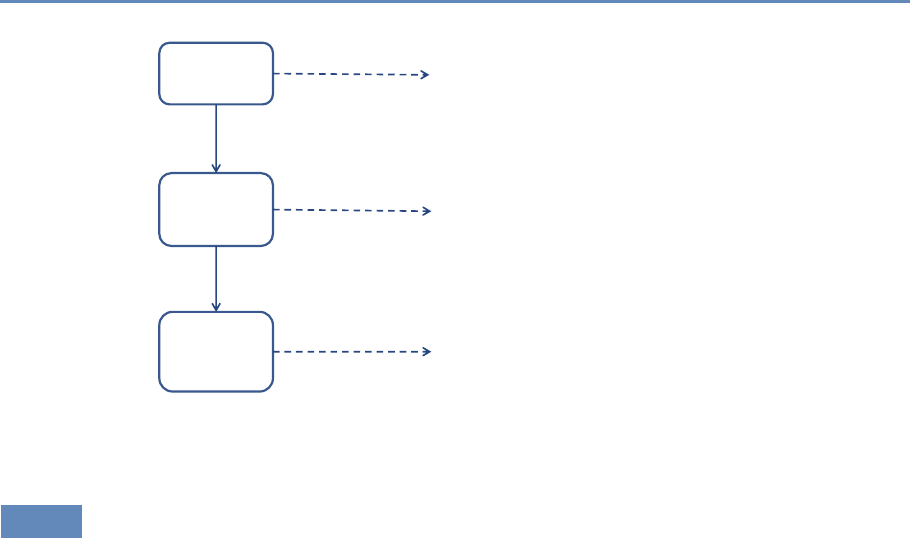

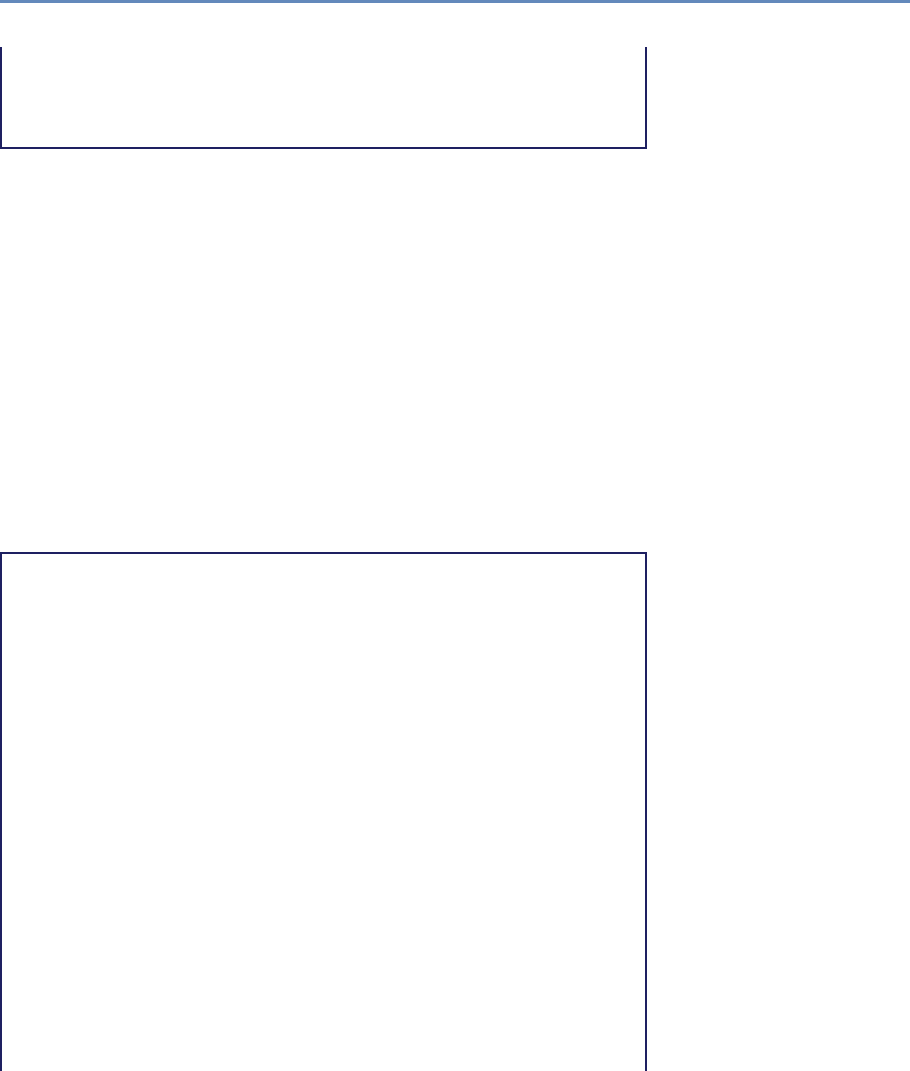

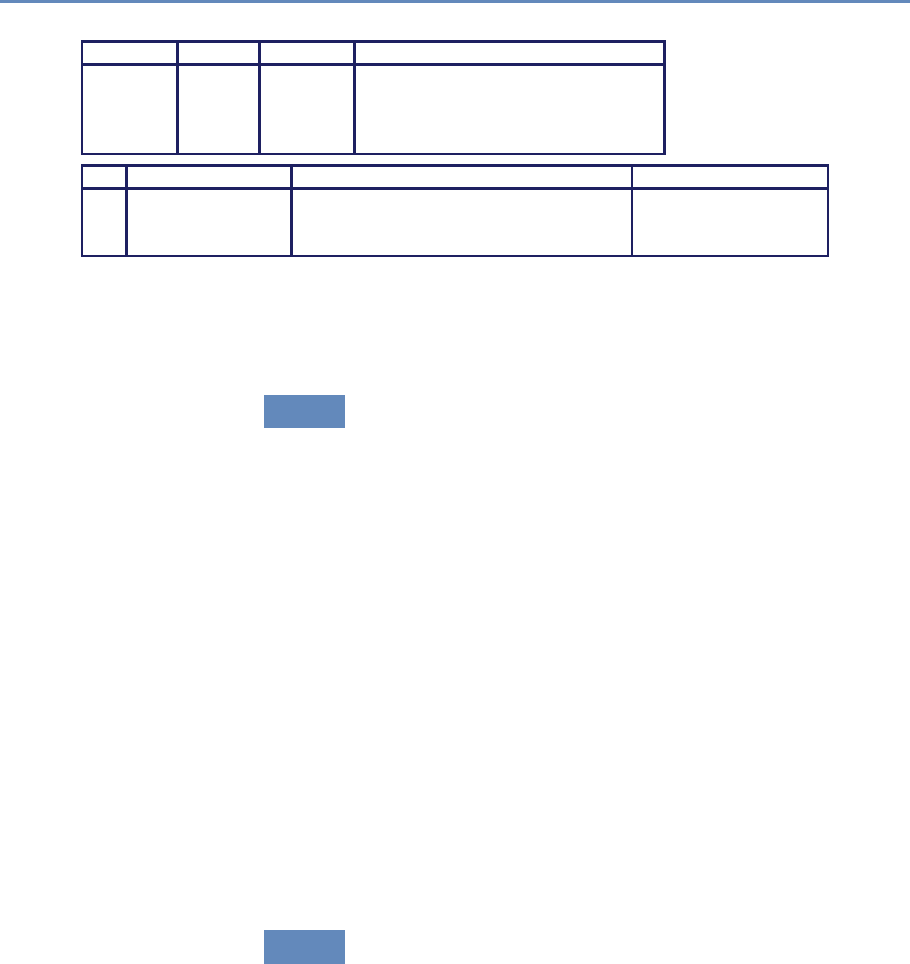

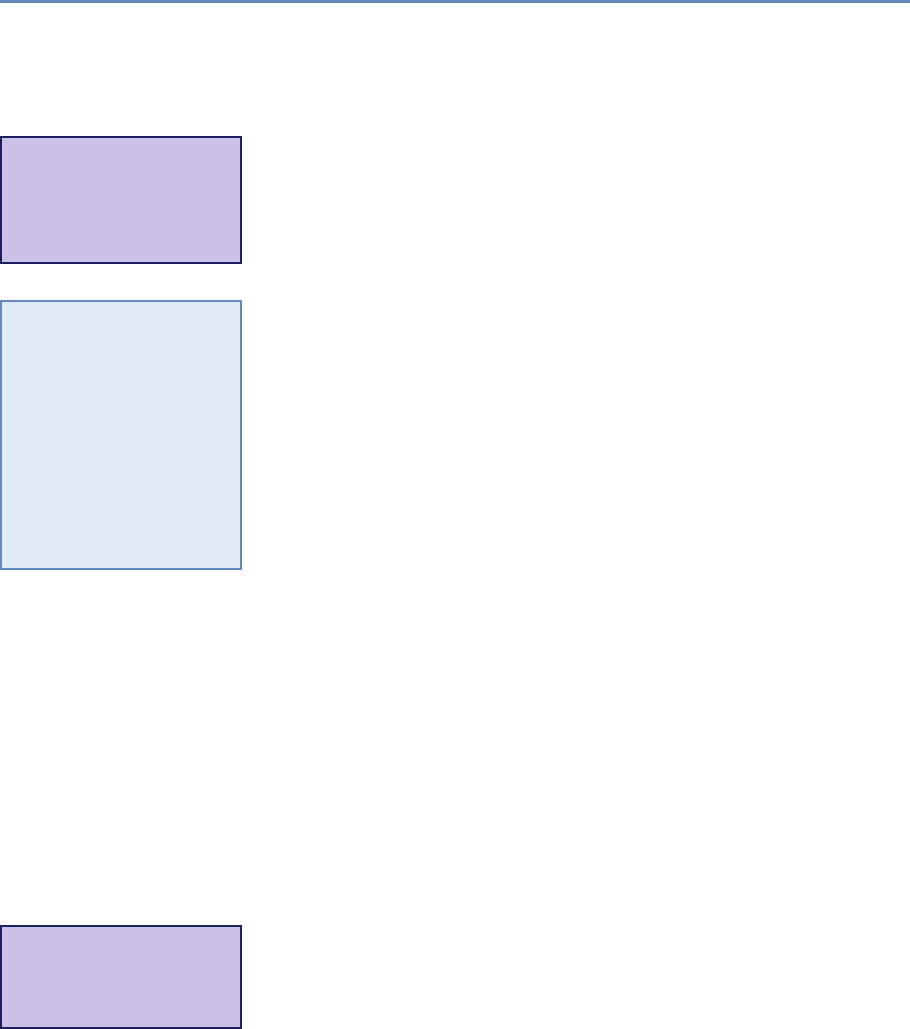

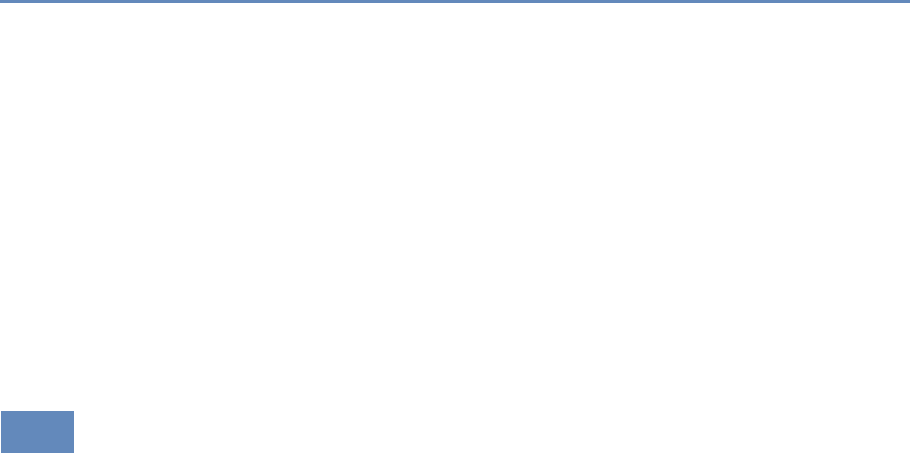

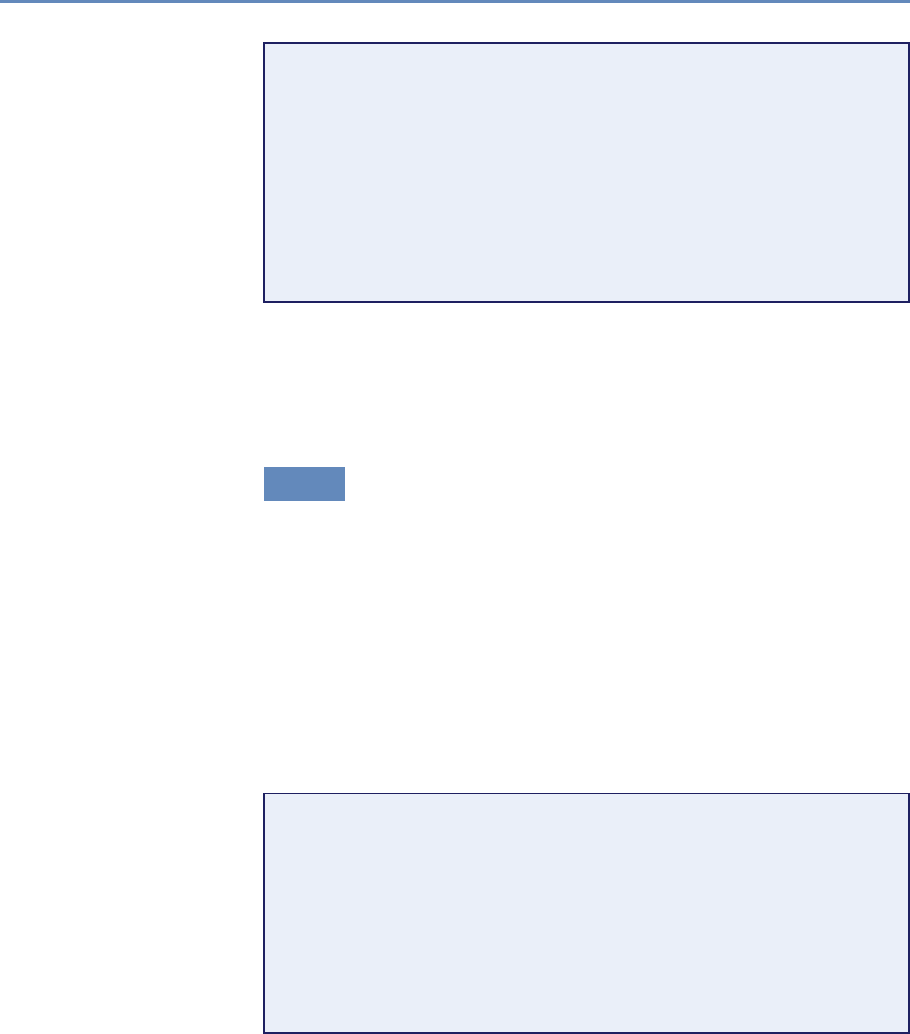

1.7.2 Part II: Modeling and analysis

The three chapters in Part II (see Figure 1.4) introduce three of the

most important tools that can be used by social scientists to do new

and exciting research: machine learning, text analysis, and social

network analysis.

Chapter 6 introduces machine learning methods. It shows the

power of machine learning in a variety of different contexts, par-

ticularly focusing on clustering and classification. You will get an

overview of basic approaches and how those approaches are applied.

The chapter builds from a conceptual framework and then shows

you how the different concepts are translated into code. There is

a particular focus on random forests and support vector machine

(SVM) approaches.

Chapter 7 describes how social scientists can make use of one of

the most exciting advances in big data—text analysis. Vast amounts

of data that are stored in documents can now be analyzed and

searched so that different types of information can be retrieved.

Documents (and the underlying activities of the entities that gener-

ated the documents) can be categorized into topics or fields as well

as summarized. In addition, machine translation can be used to

compare documents in different languages.

16 1. Introduction

Social scientists are typically interested in describing the activi-

ties of individuals and organizations (such as households and firms)

in a variety of economic and social contexts. The frames within

which data are collected have typically been generated from tax or

other programmatic sources. The new types of data permit new

units of analysis—particularly network analysis—largely enabled by

advances in mathematical graph theory. Thus, Chapter 8 describes

how social scientists can use network theory to generate measurable

representations of patterns of relationships connecting entities. As

the author points out, the value of the new framework is not only in

constructing different right-hand-side variables but also in study-

ing an entirely new unit of analysis that lies somewhere between

the largely atomistic actors that occupy the markets of neo-classical

theory and the tightly managed hierarchies that are the traditional

object of inquiry of sociologists and organizational theorists.

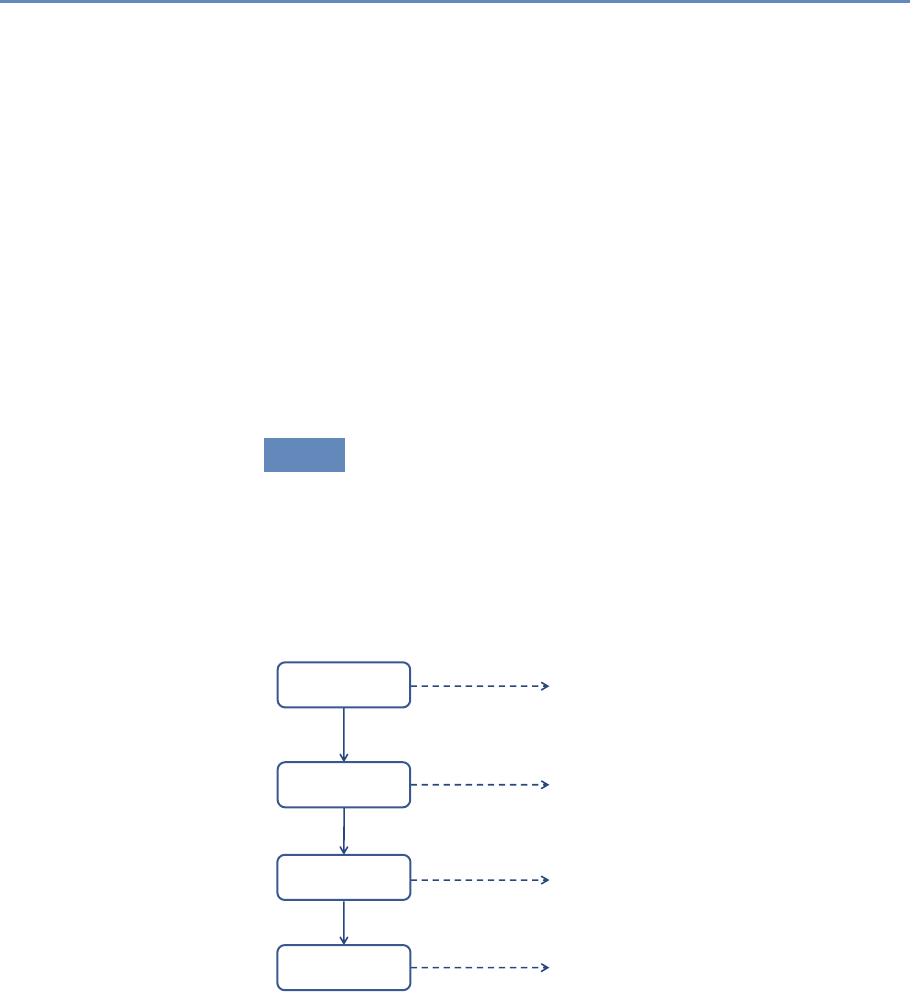

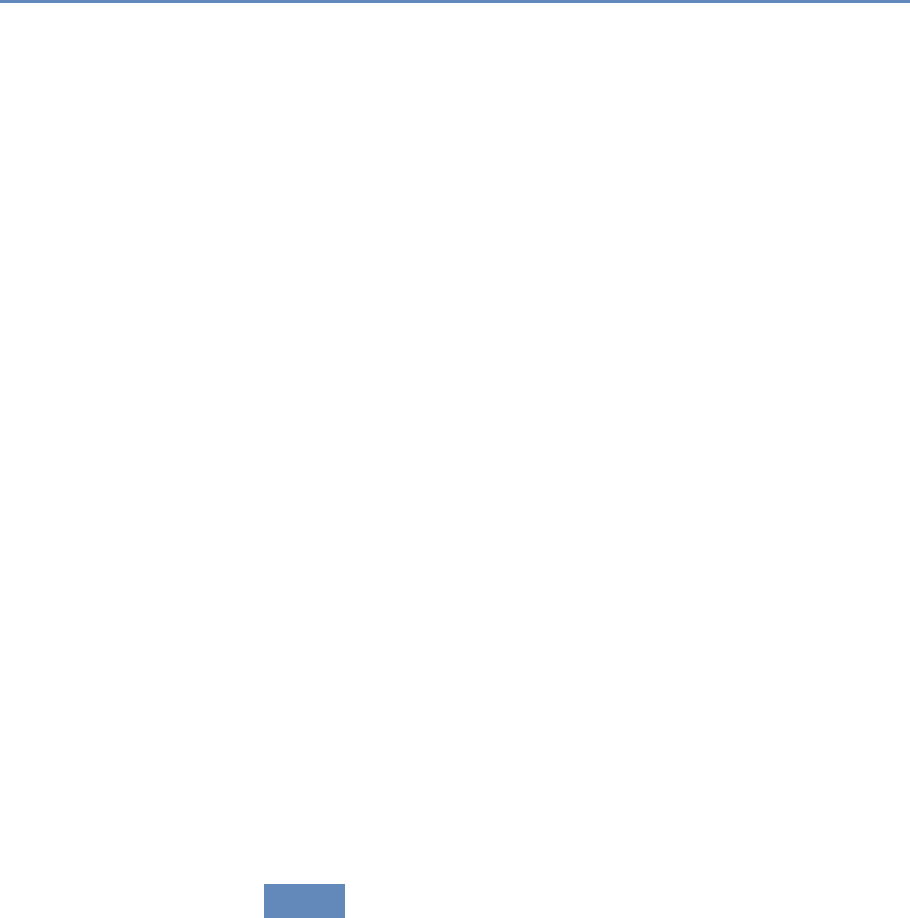

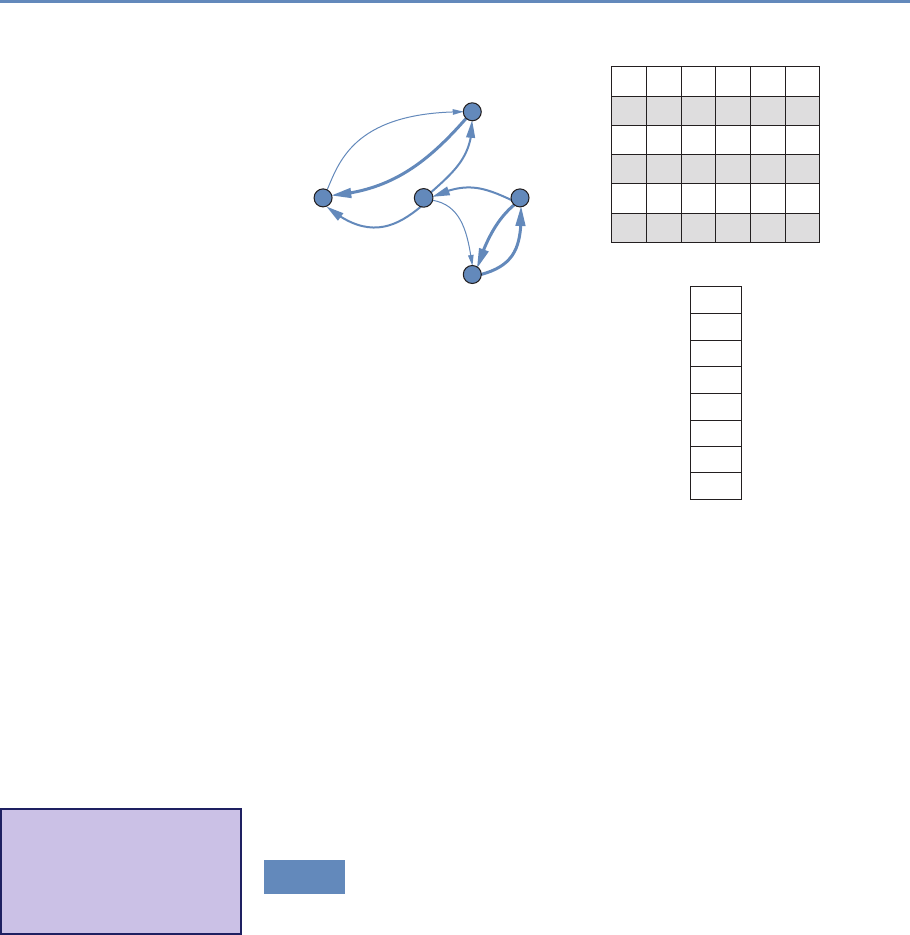

1.7.3 Part III: Inference and ethics

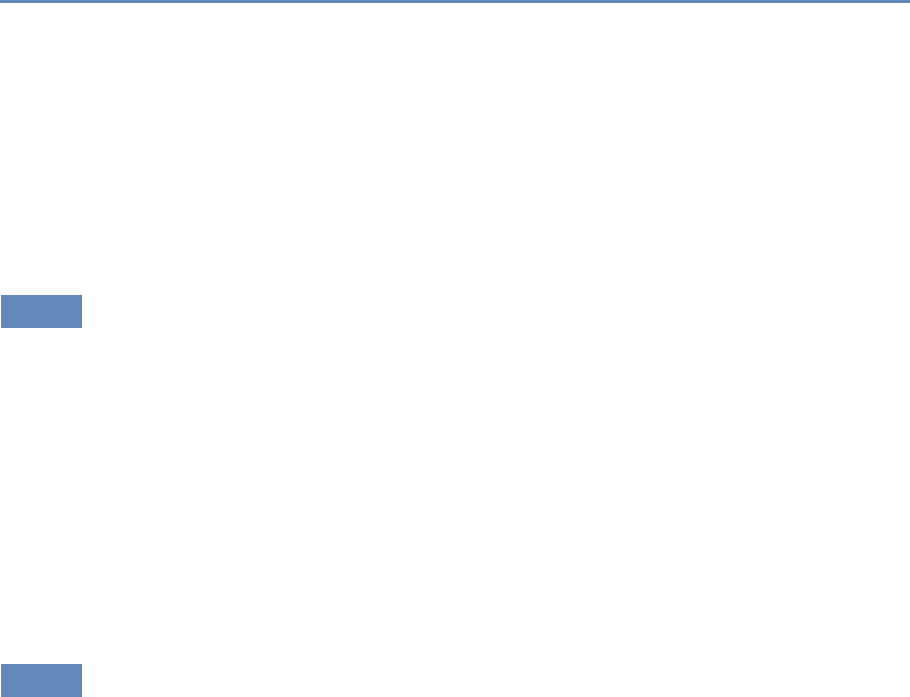

The four chapters in Part III (see Figure 1.5) cover three advanced

topics relating to data inference and ethics—information visualiza-

tion, errors and inference, and privacy and confidentiality—and in-

troduce the workbooks that provide access to the practical exercises

associated with the text.

Visualization

Inference

Privacy and

Condentiality

Chapter 9: Making sense of the data

Chapter 10: Drawing statistically valid conclusions

Chapter 11: Handling data appropriately

Chapter 12: Applying new models and tools

Workbooks

Figure 1.5. The four chapters in Part III focus on inference and ethics

1.8. Resources 17

Chapter 9 introduces information visualization methods and de-

scribes how you can use those methods to explore data and com-

municate results so that data can be turned into interpretable, ac-

tionable information. There are many ways of presenting statis-

tical information that convey content in a rigorous manner. The

goal of this chapter is to explore different approaches and exam-

ine the information content and analytical validity of the different

approaches. It provides an overview of effective visualizations.

Chapter 10 deals with inference and the errors associated with

big data. Social scientists know only too well the cost associated

with bad data—we highlighted the classic Literary Digest example

in the introduction to this chapter, as well as the more recent Google

Flu Trends. Although the consequences are well understood, the

new types of data are so large and complex that their properties

often cannot be studied in traditional ways. In addition, the data

generating function is such that the data are often selective, in-

complete, and erroneous. Without proper data hygiene, errors can

quickly compound. This chapter provides a systematic way to think

about the error framework in a big data setting.

Chapter 11 addresses the issue that sits at the core of any study

of human beings—privacy and confidentiality. In a new field, like the

one covered in this book, it is critical that many researchers have

access to the data so that work can be replicated and built on—that

there be a scientific basis to data science. Yet the rules that social

scientists have traditionally used for survey data, namely anonymity

and informed consent, no longer apply when the data are collected

in the wild. This concluding chapter identifies the issues that must

be addressed for responsible and ethical research to take place.

Finally, Chapter 12 provides an overview of the practical work

that accompanies each chapter—the workbooks that are designed,

using Jupyter notebooks, to enable students and interested prac- ◮See jupyter.org.

titioners to apply the new techniques and approaches in selected

chapters. We hope you have a lot of fun with them.

1.8 Resources

For more information on the science of science policy, see Husbands

et al.’s book for a full discussion of many issues [175] and the online

resources at the eponymous website [352].

This book is above all a practical introduction to the methods and

tools that the social scientist can use to make sense of big data,

and thus programming resources are also important. We make

18 1. Introduction

extensive use of the Python programming language and the MySQL

database management system in both the book and its supporting

workbooks. We recommend that any social scientist who aspires

to work with large data sets become proficient in the use of these

two systems, and also one more, GitHub. All three, fortunately, are

quite accessible and are supported by excellent online resources.

Time spent mastering them will be repaid many times over in more

productive research.

For Python, Alex Bell’s Python for Economists (available online

◮Read this! http://bit.ly/

1VgytVV [31]) provides a wonderful 30-page introduction to the use of Python

in the social sciences, complete with XKCD cartoons. Economists

Tom Sargent and John Stachurski provide a very useful set of lec-

tures and examples at http://quant-econ.net/. For more detail, we

recommend Charles Severance’s Python for Informatics: Exploring

Information [338], which not only covers basic Python but also pro-

vides material relevant to web data (the subject of Chapter 2) and

MySQL (the subject of Chapter 4). This book is also freely available

online and is supported by excellent online lectures and exercises.

For MySQL, Chapter 4 provides introductory material and point-

ers to additional resources, so we will not say more here.

We also recommend that you master GitHub. A version control

system is a tool for keeping track of changes that have been made

to a document over time. GitHub is a hosting service for projects

that use the Git version control system. As Strasser explains [363],

Git/GitHub makes it straightforward for researchers to create digi-

tal lab notebooks that record the data files, programs, papers, and

other resources associated with a project, with automatic tracking

of the changes that are made to those resources over time. GitHub

also makes it easy for collaborators to work together on a project,

whether a program or a paper: changes made by each contribu-

tor are recorded and can easily be reconciled. For example, we

used GitHub to create this book, with authors and editors check-

ing in changes and comments at different times and from many time

zones. We also use GitHub to provide access to the supporting work-

books. Ram [314] provides a nice description of how Git/GitHub can

be used to promote reproducibility and transparency in research.

One more resource that is outside the scope of this book but that

you may well want to master is the cloud [21,236]. It used to be that

when your data and computations became too large to analyze on

your laptop, you were out of luck unless your employer (or a friend)

had a larger computer. With the emergence of cloud storage and

computing services from the likes of Amazon Web Services, Google,

and Microsoft, powerful computers are available to anyone with a

Working with Web Data and APIs

Chapter 2

Cameron Neylon

This chapter will show you how to extract information from social

media about the transmission of knowledge. The particular appli-

cation will be to develop links to authors’ articles on Twitter using

PLOS articles and to pull information using an API. You will get link

data from bookmarking services, citations from Crossref, links from

Facebook, and information from news coverage. The examples that

will be used are from Twitter. In keeping with the social science

grounding that is a core feature of the book, it will discuss what can

be captured, what is potentially reliable, and how to manage data

quality issues.

2.1 Introduction

A tremendous lure of the Internet is the availability of vast amounts

of data on businesses, people, and their activity on social media.

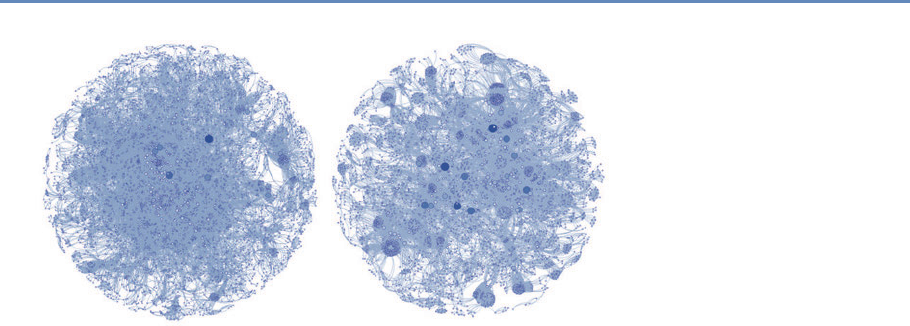

But how can we capture the information and make use of it as we