(Studies In Big Data 26) Srinivasan S. (ed.) Guide To Applications Springer (2018)

(Studies%20in%20Big%20Data%2026)%20Srinivasan%20S.%20(ed.)-Guide%20to%20Big%20Data%20Applications-Springer%20(2018)

User Manual:

Open the PDF directly: View PDF ![]() .

.

Page Count: 567 [warning: Documents this large are best viewed by clicking the View PDF Link!]

- Foreword

- Preface

- Acknowledgements

- Contents

- List of Reviewers

- Part I General

- 1 Strategic Applications of Big Data

- 1.1 Introduction

- 1.2 From Value Disciplines to Digital Disciplines

- 1.3 Information Excellence

- 1.4 Solution Leadership

- 1.4.1 Digital-Physical Mirroring

- 1.4.2 Real-Time Product/Service Optimization

- 1.4.3 Product/Service Usage Optimization

- 1.4.4 Predictive Analytics and Predictive Maintenance

- 1.4.5 Product-Service System Solutions

- 1.4.6 Long-Term Product Improvement

- 1.4.7 The Experience Economy

- 1.4.8 Experiences

- 1.4.9 Transformations

- 1.4.10 Customer-Centered Product and Service Data Integration

- 1.4.11 Beyond Business

- 1.5 Collective Intimacy

- 1.6 Accelerated Innovation

- 1.7 Integrated Disciplines

- 1.8 Conclusion

- References

- 2 Start with Privacy by Design in All Big Data Applications

- 3 Privacy Preserving Federated Big Data Analysis

- 4 Word Embedding for Understanding Natural Language:A Survey

- 1 Strategic Applications of Big Data

- Part II Applications in Science

- 5 Big Data Solutions to Interpreting Complex Systems in the Environment

- 6 High Performance Computing and Big Data

- 6.1 Introduction

- 6.2 High Performance in Action

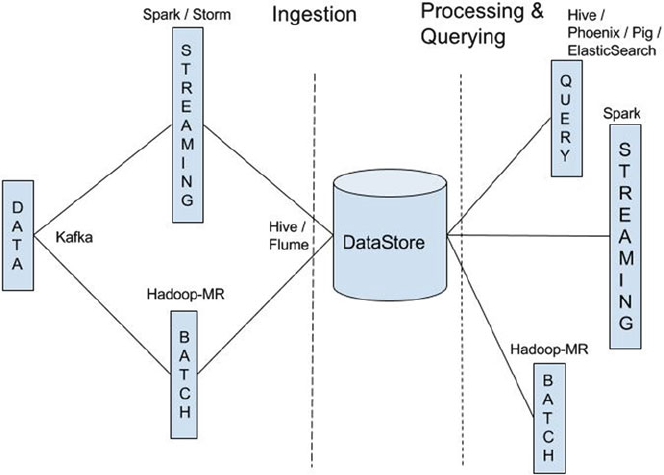

- 6.3 High-Performance and Big Data Deployment Types

- 6.4 Software and Hardware Considerations for Building Highly Performant Data Platforms

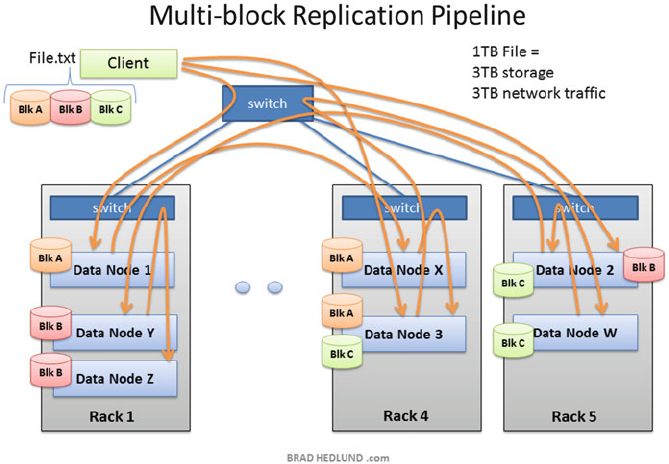

- 6.5 Designing Data Pipelines for High Performance

- 6.6 Conclusions

- References

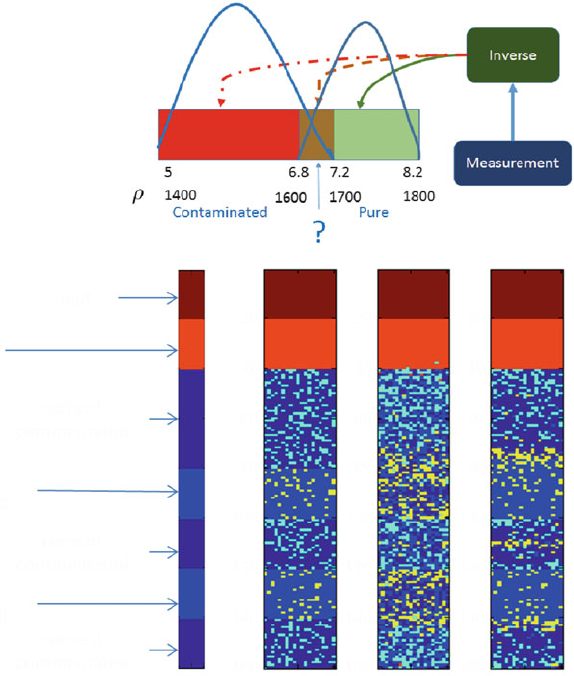

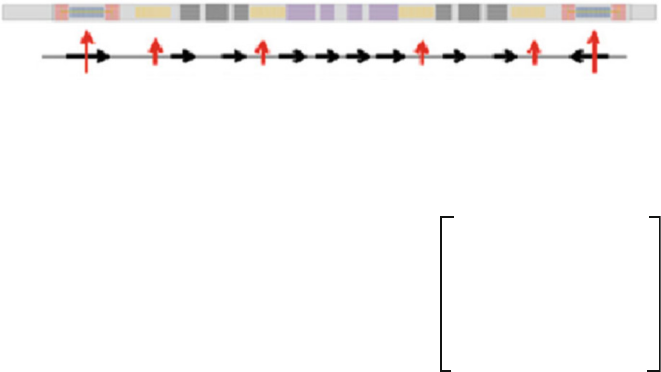

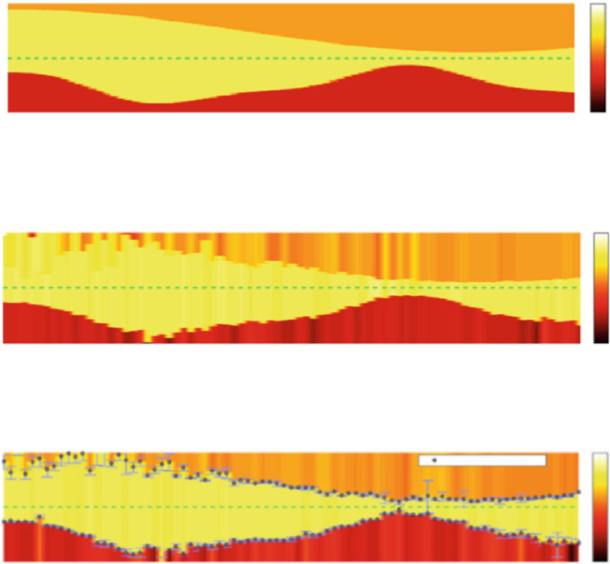

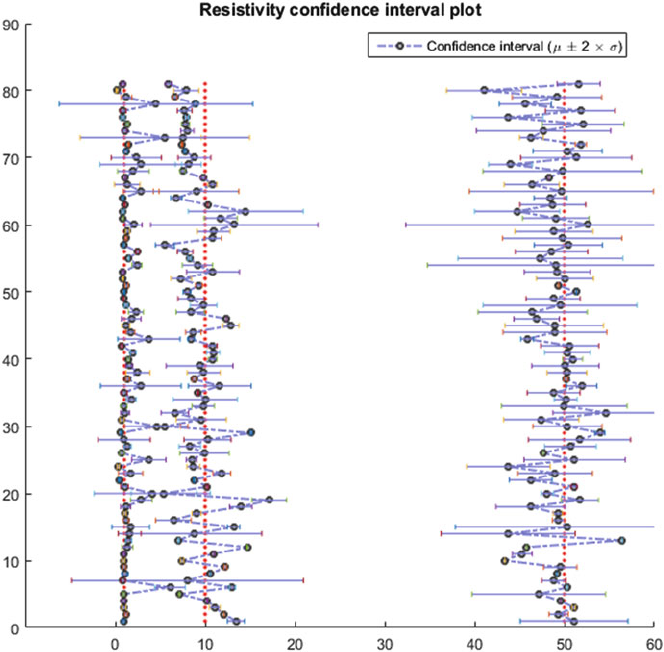

- 7 Managing Uncertainty in Large-Scale Inversions for the Oil and Gas Industry with Big Data

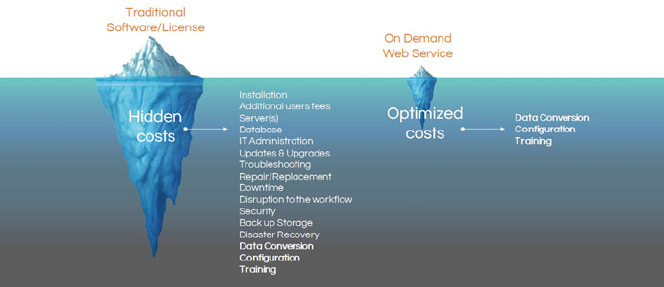

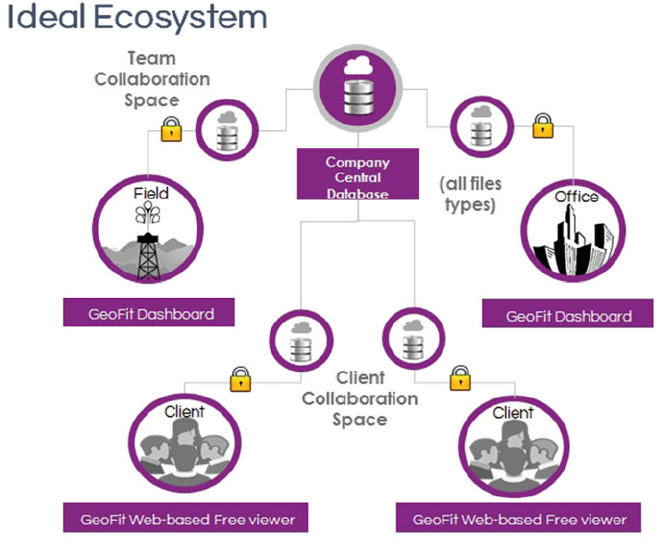

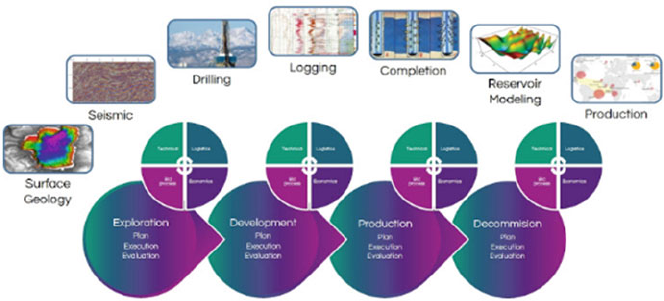

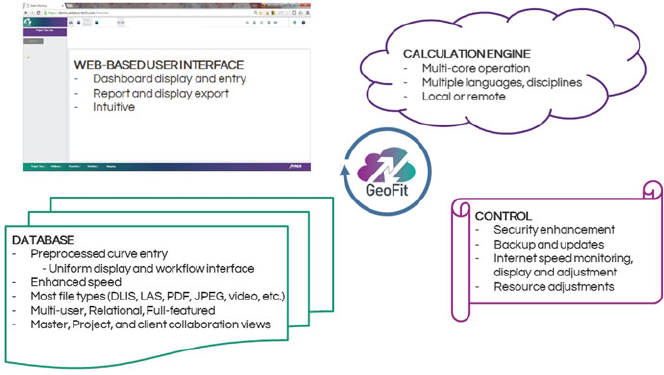

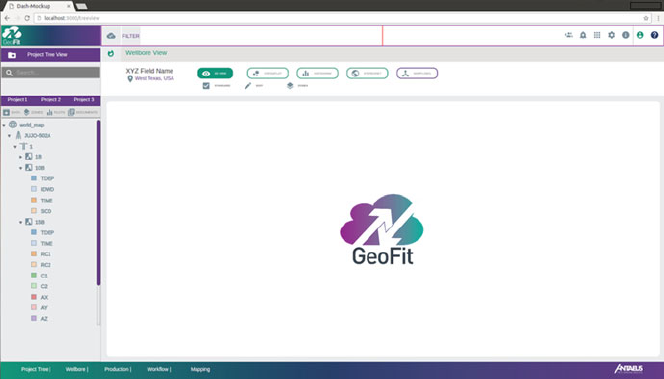

- 8 Big Data in Oil & Gas and Petrophysics

- 8.1 Introduction

- 8.2 The Value of Big Data for the Petroleum Industry

- 8.3 General Explanation of Terms

- 8.4 Steps to Big Data in the Oilfield

- 8.5 Future of Tools, Example

- 8.6 Next Step: Big Data Analytics in the Oil Industry

- 8.7 Big Data is the future of O&G

- 8.8 In Conclusion

- References

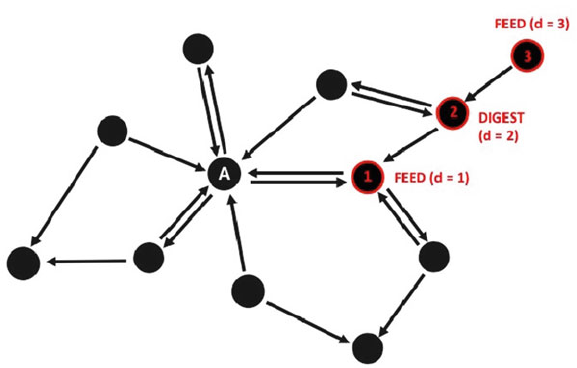

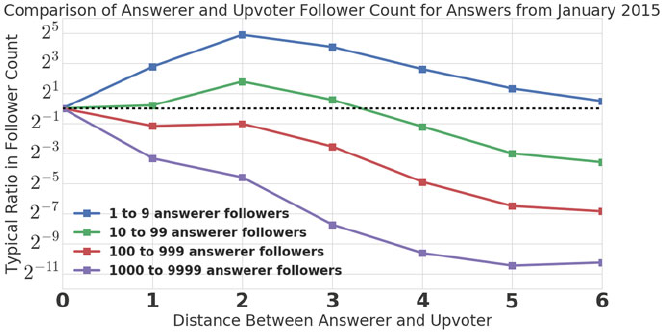

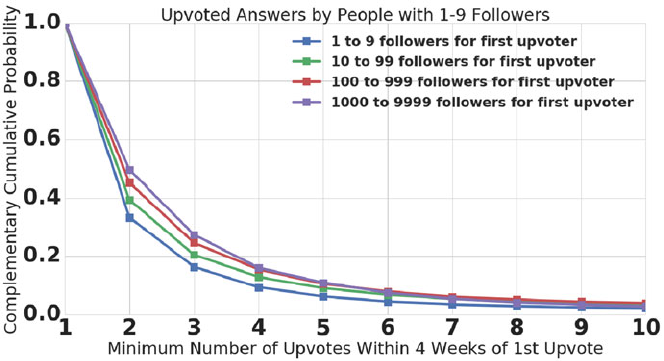

- 9 Friendship Paradoxes on Quora

- 9.1 Introduction

- 9.2 A Brief Review of the Statistics of Friendship Paradoxes: What are Strong Paradoxes, and Why Should We Measure Them?

- 9.2.1 Feld's Mathematical Argument

- 9.2.2 What Does Feld's Argument Imply?

- 9.2.3 Friendship Paradox Under Random Wiring

- 9.2.4 Beyond Random-Wiring Assumptions: Why Weak and Strong Friendship Paradoxes are Ubiquitous in Undirected Networks

- 9.2.5 Weak Generalized Paradoxes are Ubiquitous Too

- 9.2.6 Strong Degree-Based Paradoxes in Directed Networks and Strong Generalized Paradoxes are Nontrivial

- 9.3 Strong Paradoxes in the Quora Follow Network

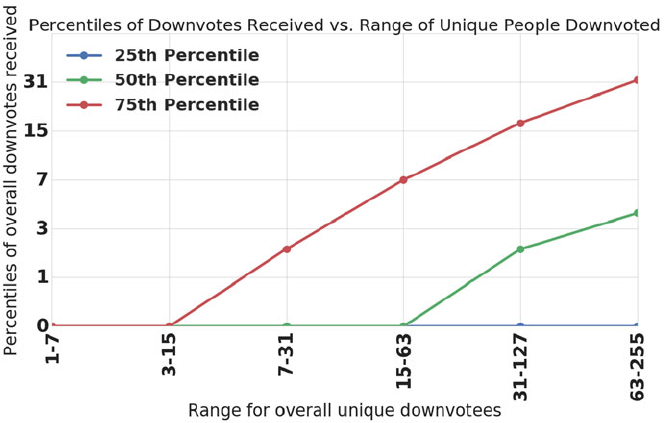

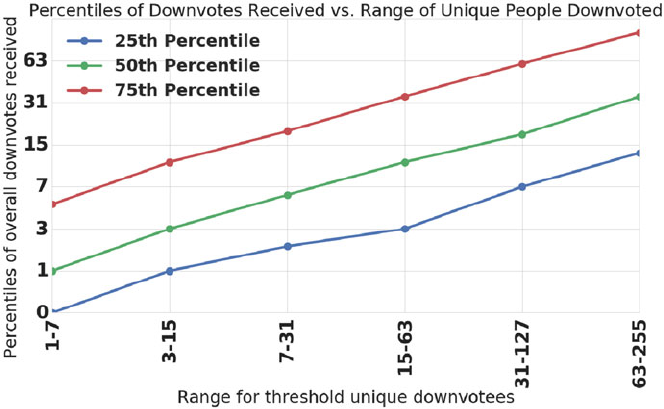

- 9.4 A Strong Paradox in Downvoting

- 9.4.1 What are Upvotes and Downvotes?

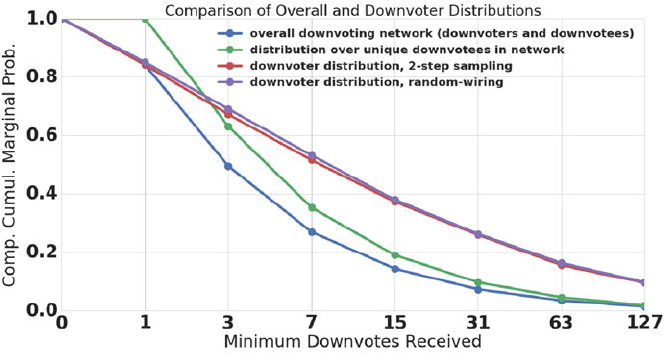

- 9.4.2 The Downvoting Network and the Core Questions

- 9.4.3 The Downvoting Paradox is Absent in the Full Downvoting Network

- 9.4.4 The Downvoting Paradox Occurs When The Downvotee and Downvoter are Active Contributors

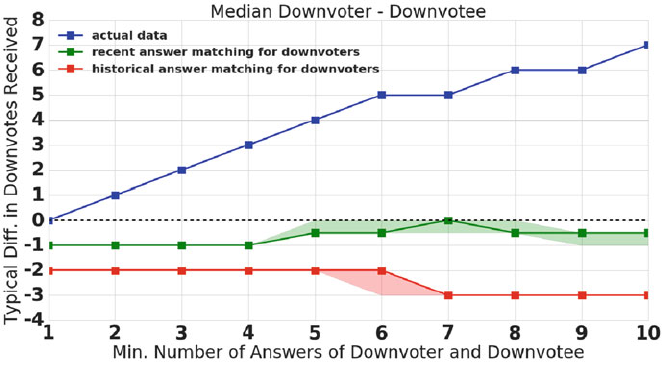

- 9.4.5 Does a ``Content-Contribution Paradox'' Explain the Downvoting Paradox?

- 9.4.6 Summary and Implications

- 9.5 A Strong Paradox in Upvoting

- 9.6 Conclusion

- References

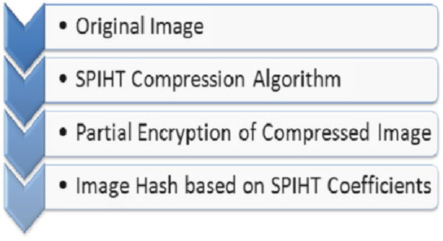

- 10 Deduplication Practices for Multimedia Data in the Cloud

- 10.1 Context and Motivation

- 10.2 Data Deduplication Technical Design Issues

- 10.3 Chapter Highlights

- 10.4 Secure Image Deduplication Through Image Compression

- 10.5 Background

- 10.6 Proposed Image Deduplication Scheme

- 10.7 Secure Video Deduplication Scheme in Cloud Storage Environment Using H.264 Compression

- 10.8 Background

- 10.9 Proposed Video Deduplication Scheme

- 10.10 Chapter Summary

- References

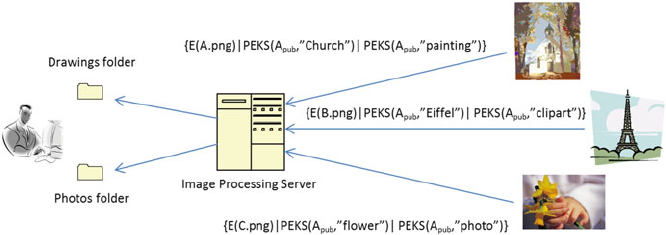

- 11 Privacy-Aware Search and Computation Over Encrypted Data Stores

- 11.1 Introduction

- 11.2 Searchable Encryption Models

- 11.3 Text

- 11.4 Range Queries

- 11.5 Media

- 11.6 Other Applications

- 11.7 Conclusions

- References

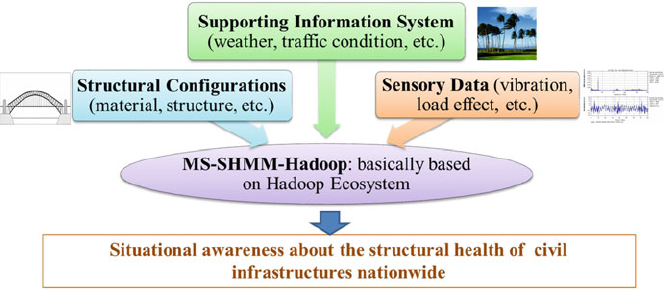

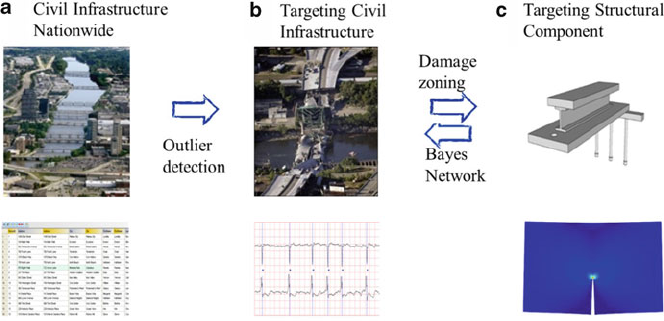

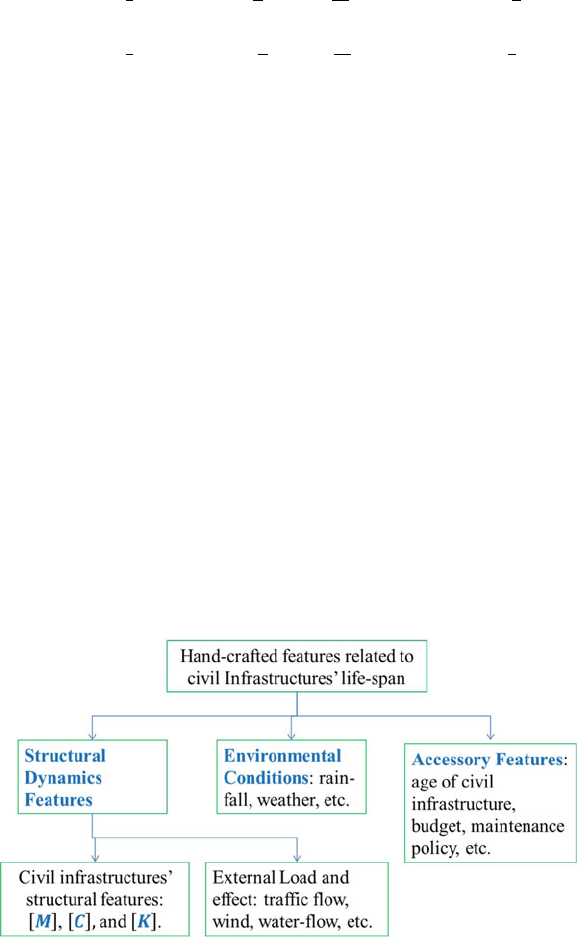

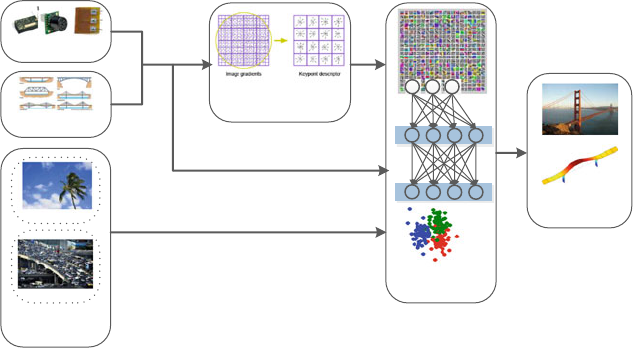

- 12 Civil Infrastructure Serviceability Evaluation Based on Big Data

- 12.1 Introduction

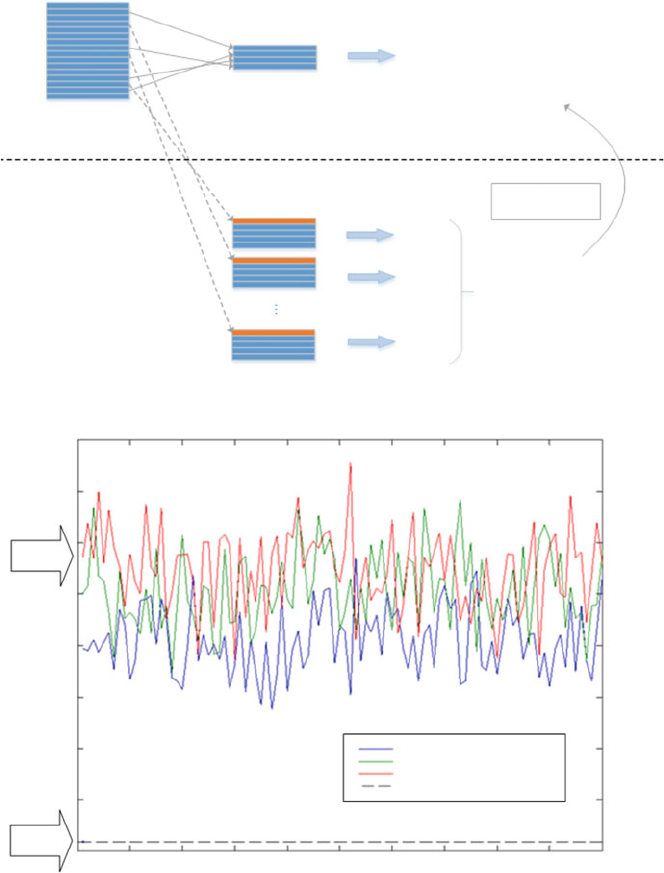

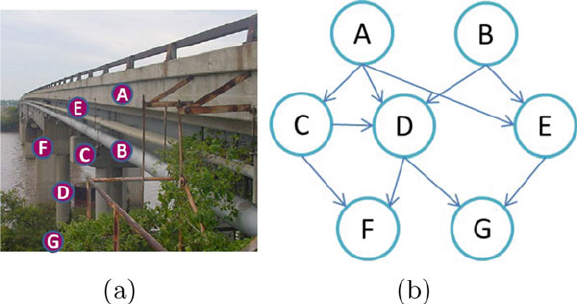

- 12.2 Implementation Framework About MS-SHM-Hadoop

- 12.3 Acquisition of Sensory Data and Integration of Structure-Related Data

- 12.4 Nationwide Civil Infrastructure Survey

- 12.5 Global Structural Integrity Analysis

- 12.6 Localized Critical Component Reliability Analysis

- 12.7 Civil Infrastructure's Reliability Analysis Based on Bayesian Network

- 12.8 Conclusion and Future Work

- References

- Part III Applications in Medicine

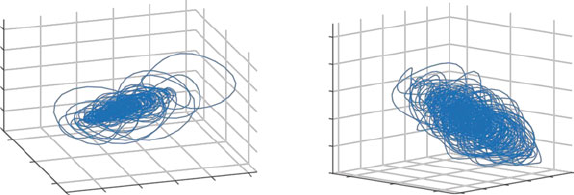

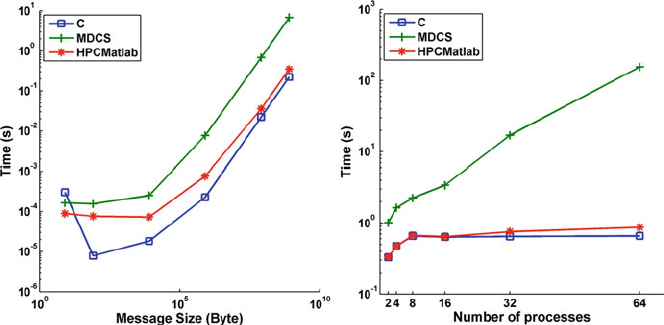

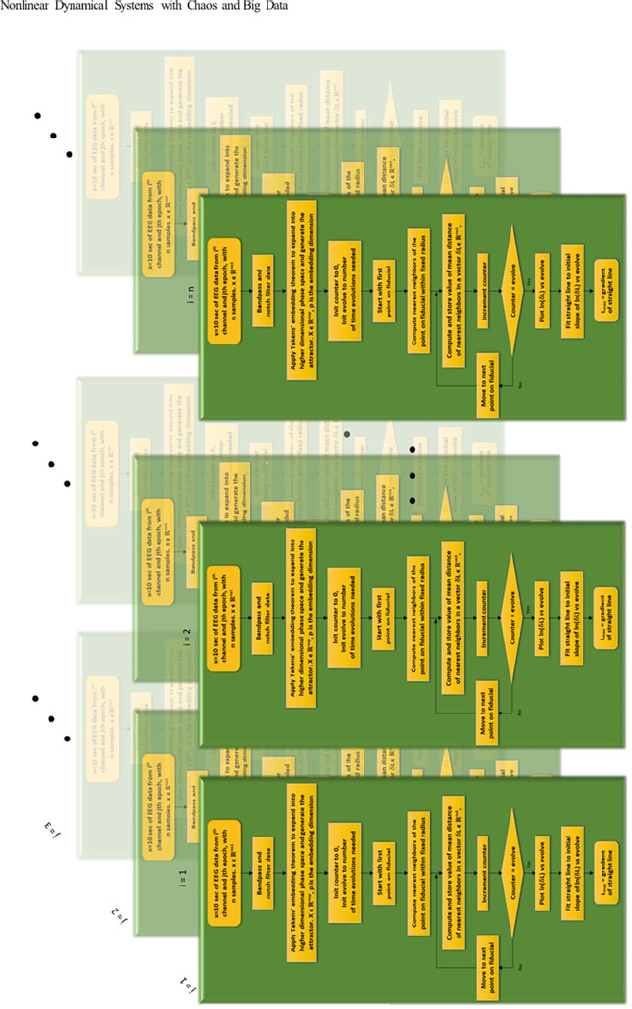

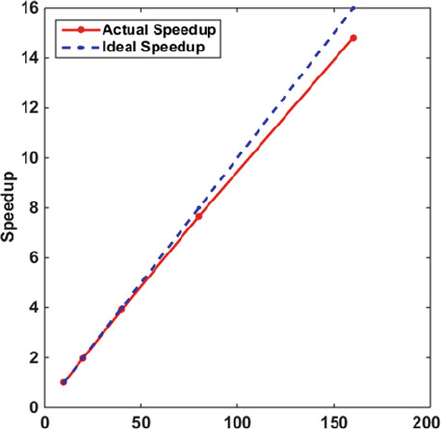

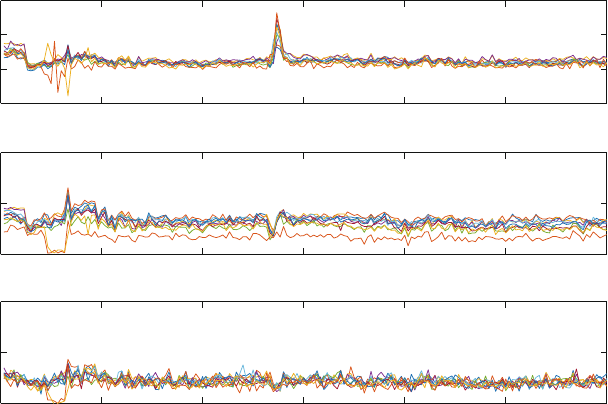

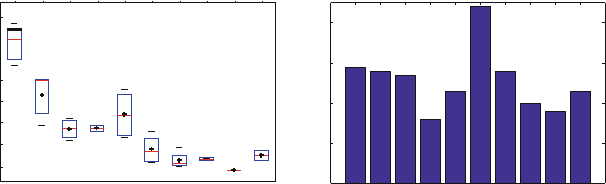

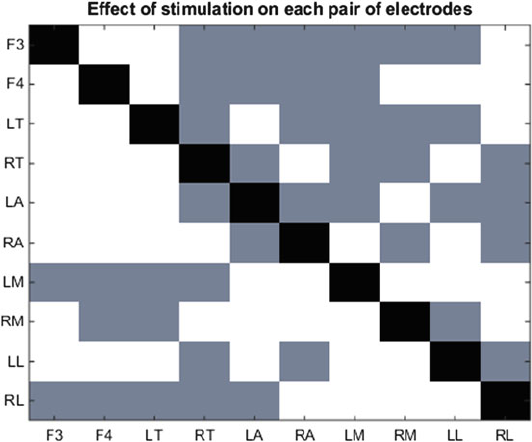

- 13 Nonlinear Dynamical Systems with Chaos and Big Data:A Case Study of Epileptic Seizure Prediction and Control

- 13.1 Introduction

- 13.2 Background

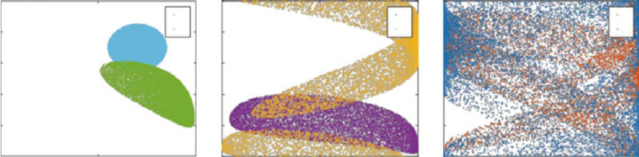

- 13.3 Nonlinear Dynamical Systems with Chaos

- 13.4 Lyapunov Exponents

- 13.5 Rapid Prototyping HPCmatlab Platform

- 13.6 Case Study: Epileptic Seizure Prediction and Control

- 13.7 Future Research Opportunities and Conclusions

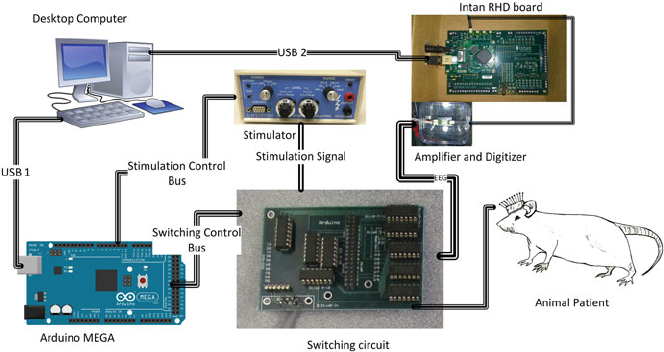

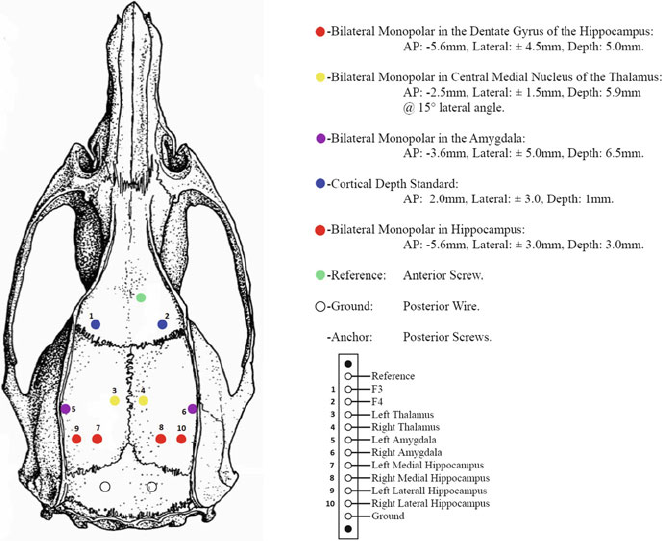

- Appendix 1: Electrical Stimulation and Experiment Setup

- Appendix 2: Preparation of Animals

- References

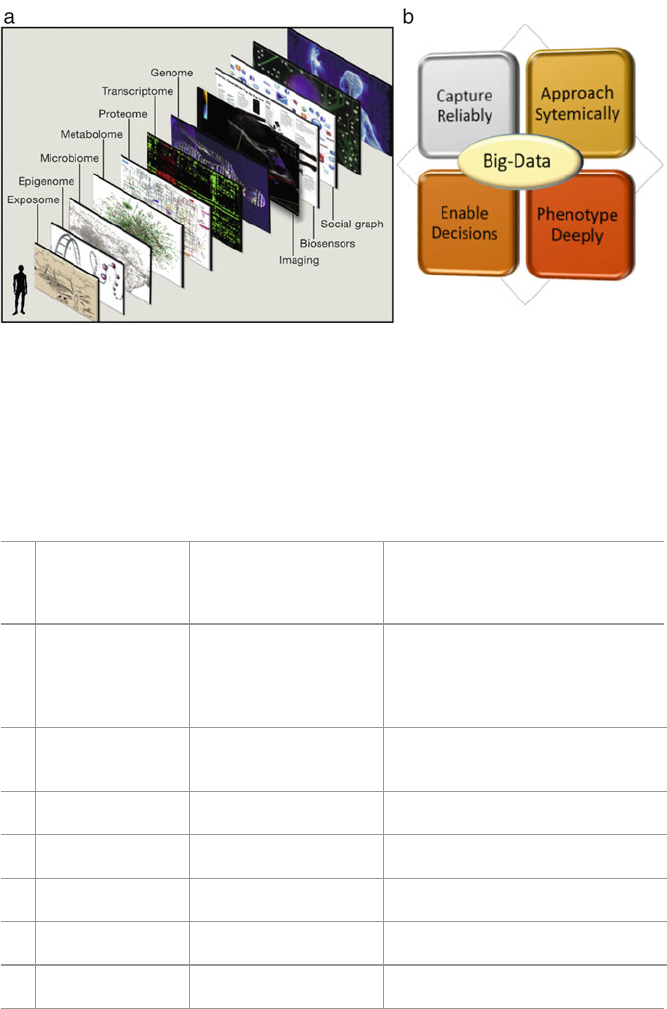

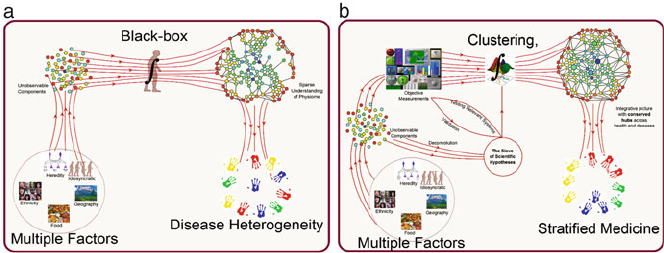

- 14 Big Data to Big Knowledge for Next Generation Medicine:A Data Science Roadmap

- 15 Time-Based Comorbidity in Patients Diagnosed with Tobacco Use Disorder

- 15.1 Introduction

- 15.2 Method

- 15.3 Results

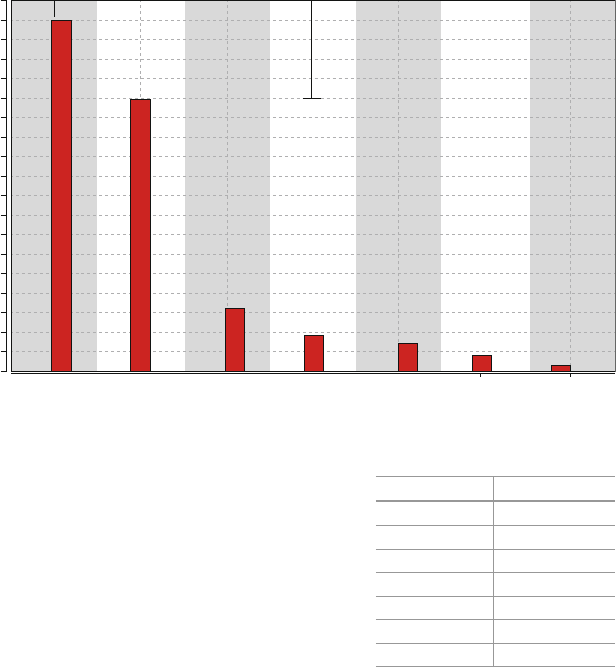

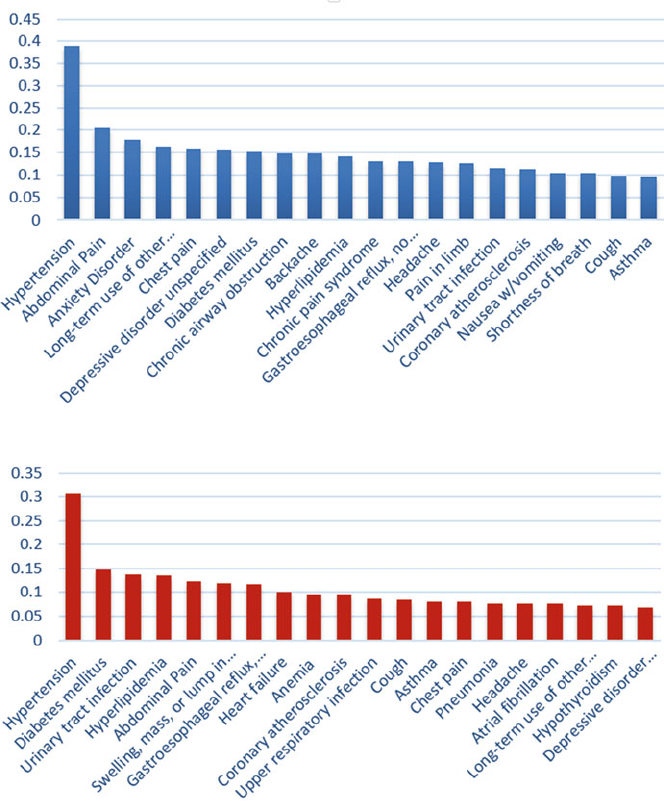

- 15.3.1 Top 20 Diseases in TUD and Non-TUD Patients

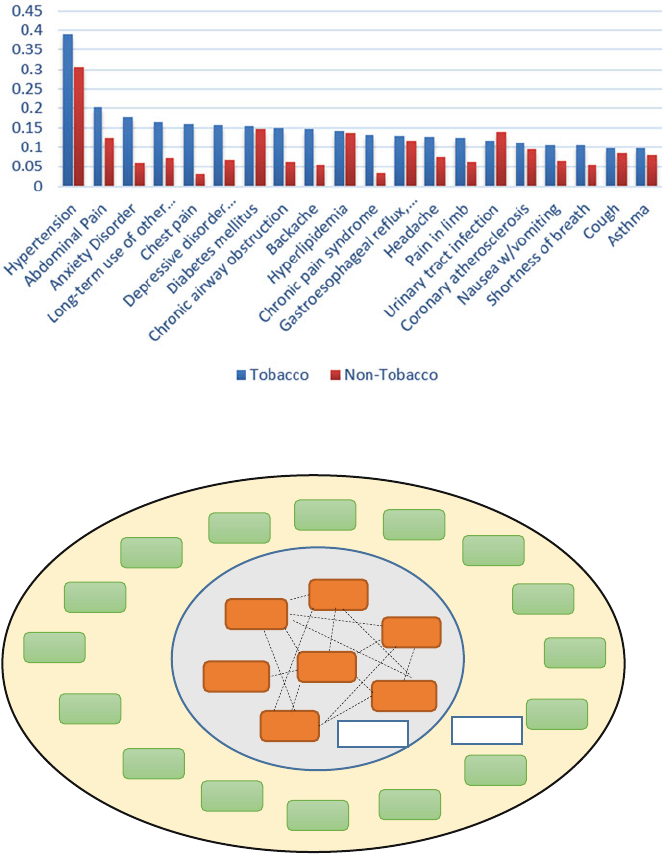

- 15.3.2 Top 20 Diseases in TUD Patients and Corresponding Prevalence in Non-TUD Patients

- 15.3.3 Top 15 Comorbidities in TUD Patients Across Two Hospital Visits (Second Iteration) and Corresponding Prevalence in Non-TUD Patients

- 15.3.4 Comorbidities in TUD Patients Across Three Hospital Visits (Third Iteration) and Comparison with Non-TUD Patients

- 15.4 Discussion and Concluding Remarks

- References

- 16 The Impact of Big Data on the Physician

- 16.1 Part 1: The Patient-Physician Relationship

- 16.1.1 Defining Quality Care

- 16.1.2 Choosing the Best Doctor

- 16.1.3 Choosing the Best Hospital

- 16.1.4 Sharing Information: Using Big Data to Expand the Patient History

- 16.1.5 What is mHealth?

- 16.1.6 mHealth from the Provider Side

- 16.1.7 mHealth from the Patient Side

- 16.1.8 Logistical Concerns

- 16.1.9 Accessibility

- 16.1.10 Privacy and Security

- 16.1.11 Regulation and Liability

- 16.1.12 Patient Education and Partnering with Patients

- 16.1.13 Developing Online Health Communities Through Social Media: Creating Data that Fuels Research

- 16.1.14 Translating Complex Medical Datainto Patient-Friendly Formats

- 16.1.15 Beyond the Package Insert: Iodine.com

- 16.1.16 Data Inspires the Advent of New Models of Medical Care Delivery

- 16.2 Part II: Physician Uses for Big Data in Clinical Care

- References

- 16.1 Part 1: The Patient-Physician Relationship

- 13 Nonlinear Dynamical Systems with Chaos and Big Data:A Case Study of Epileptic Seizure Prediction and Control

- Part IV Applications in Business

- 17 The Potential of Big Data in Banking

- 18 Marketing Applications Using Big Data

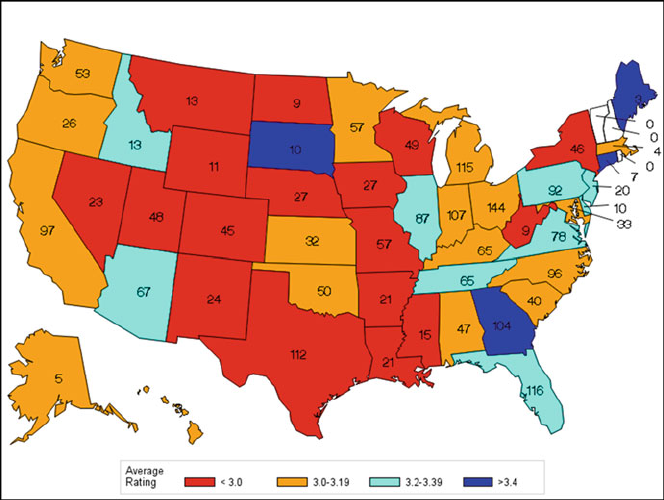

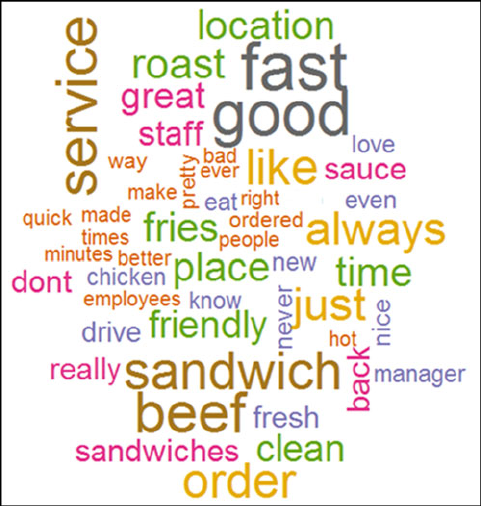

- 19 Does Yelp Matter? Analyzing (And Guide to Using) Ratings for a Quick Serve Restaurant Chain

- Author Biographies

- Index

Studies in Big Data 26

S.Srinivasan Editor

Guide to

Big Data

Applications

About this Series

The series “Studies in Big Data” (SBD) publishes new developments and advances

in the various areas of Big Data – quickly and with a high quality. The intent

is to cover the theory, research, development, and applications of Big Data, as

embedded in the fields of engineering, computer science, physics, economics and

life sciences. The books of the series refer to the analysis and understanding of

large, complex, and/or distributed data sets generated from recent digital sources

coming from sensors or other physical instruments as well as simulations, crowd

sourcing, social networks or other internet transactions, such as emails or video

click streams and other. The series contains monographs, lecture notes and edited

volumes in Big Data spanning the areas of computational intelligence including

neural networks, evolutionary computation, soft computing, fuzzy systems, as well

as artificial intelligence, data mining, modern statistics and Operations research, as

well as self-organizing systems. Of particular value to both the contributors and

the readership are the short publication timeframe and the world-wide distribution,

which enable both wide and rapid dissemination of research output.

More information about this series at http://www.springer.com/series/11970

S. Srinivasan

Editor

Guide to Big Data

Applications

123

Editor

S. Srinivasan

Jesse H. Jones School of Business

Texas Southern University

Houston, TX, USA

ISSN 2197-6503 ISSN 2197-6511 (electronic)

Studies in Big Data

ISBN 978-3-319-53816-7 ISBN 978-3-319-53817-4 (eBook)

DOI 10.1007/978-3-319-53817-4

Library of Congress Control Number: 2017936371

© Springer International Publishing AG 2018

This work is subject to copyright. All rights are reserved by the Publisher, whether the whole or part of

the material is concerned, specifically the rights of translation, reprinting, reuse of illustrations, recitation,

broadcasting, reproduction on microfilms or in any other physical way, and transmission or information

storage and retrieval, electronic adaptation, computer software, or by similar or dissimilar methodology

now known or hereafter developed.

The use of general descriptive names, registered names, trademarks, service marks, etc. in this publication

does not imply, even in the absence of a specific statement, that such names are exempt from the relevant

protective laws and regulations and therefore free for general use.

The publisher, the authors and the editors are safe to assume that the advice and information in this book

are believed to be true and accurate at the date of publication. Neither the publisher nor the authors or

the editors give a warranty, express or implied, with respect to the material contained herein or for any

errors or omissions that may have been made. The publisher remains neutral with regard to jurisdictional

claims in published maps and institutional affiliations.

Printed on acid-free paper

This Springer imprint is published by Springer Nature

The registered company is Springer International Publishing AG

The registered company address is: Gewerbestrasse 11, 6330 Cham, Switzerland

To my wife Lakshmi and grandson Sahaas

Foreword

It gives me great pleasure to write this Foreword for this timely publication on the

topic of the ever-growing list of Big Data applications. The potential for leveraging

existing data from multiple sources has been articulated over and over, in an

almost infinite landscape, yet it is important to remember that in doing so, domain

knowledge is key to success. Naïve attempts to process data are bound to lead to

errors such as accidentally regressing on noncausal variables. As Michael Jordan

at Berkeley has pointed out, in Big Data applications the number of combinations

of the features grows exponentially with the number of features, and so, for any

particular database, you are likely to find some combination of columns that will

predict perfectly any outcome, just by chance alone. It is therefore important that

we do not process data in a hypothesis-free manner and skip sanity checks on our

data.

In this collection titled “Guide to Big Data Applications,” the editor has

assembled a set of applications in science, medicine, and business where the authors

have attempted to do just this—apply Big Data techniques together with a deep

understanding of the source data. The applications covered give a flavor of the

benefits of Big Data in many disciplines. This book has 19 chapters broadly divided

into four parts. In Part I, there are four chapters that cover the basics of Big

Data, aspects of privacy, and how one could use Big Data in natural language

processing (a particular concern for privacy). Part II covers eight chapters that

vii

viii Foreword

look at various applications of Big Data in environmental science, oil and gas, and

civil infrastructure, covering topics such as deduplication, encrypted search, and the

friendship paradox.

Part III covers Big Data applications in medicine, covering topics ranging

from “The Impact of Big Data on the Physician,” written from a purely clinical

perspective, to the often discussed deep dives on electronic medical records.

Perhaps most exciting in terms of future landscaping is the application of Big Data

application in healthcare from a developing country perspective. This is one of the

most promising growth areas in healthcare, due to the current paucity of current

services and the explosion of mobile phone usage. The tabula rasa that exists in

many countries holds the potential to leapfrog many of the mistakes we have made

in the west with stagnant silos of information, arbitrary barriers to entry, and the

lack of any standardized schema or nondegenerate ontologies.

In Part IV, the book covers Big Data applications in business, which is perhaps

the unifying subject here, given that none of the above application areas are likely

to succeed without a good business model. The potential to leverage Big Data

approaches in business is enormous, from banking practices to targeted advertising.

The need for innovation in this space is as important as the underlying technologies

themselves. As Clayton Christensen points out in The Innovator’s Prescription,

three revolutions are needed for a successful disruptive innovation:

1. A technology enabler which “routinizes” previously complicated task

2. A business model innovation which is affordable and convenient

3. A value network whereby companies with disruptive mutually reinforcing

economic models sustain each other in a strong ecosystem

We see this happening with Big Data almost every week, and the future is

exciting.

In this book, the reader will encounter inspiration in each of the above topic

areas and be able to acquire insights into applications that provide the flavor of this

fast-growing and dynamic field.

Atlanta, GA, USA Gari Clifford

December 10, 2016

Preface

Big Data applications are growing very rapidly around the globe. This new approach

to decision making takes into account data gathered from multiple sources. Here my

goal is to show how these diverse sources of data are useful in arriving at actionable

information. In this collection of articles the publisher and I are trying to bring

in one place several diverse applications of Big Data. The goal is for users to see

how a Big Data application in another field could be replicated in their discipline.

With this in mind I have assembled in the “Guide to Big Data Applications” a

collection of 19 chapters written by academics and industry practitioners globally.

These chapters reflect what Big Data is, how privacy can be protected with Big

Data and some of the important applications of Big Data in science, medicine and

business. These applications are intended to be representative and not exhaustive.

For nearly two years I spoke with major researchers around the world and the

publisher. These discussions led to this project. The initial Call for Chapters was

sent to several hundred researchers globally via email. Approximately 40 proposals

were submitted. Out of these came commitments for completion in a timely manner

from 20 people. Most of these chapters are written by researchers while some are

written by industry practitioners. One of the submissions was not included as it

could not provide evidence of use of Big Data. This collection brings together in

one place several important applications of Big Data. All chapters were reviewed

using a double-blind process and comments provided to the authors. The chapters

included reflect the final versions of these chapters.

I have arranged the chapters in four parts. Part I includes four chapters that deal

with basic aspects of Big Data and how privacy is an integral component. In this

part I include an introductory chapter that lays the foundation for using Big Data

in a variety of applications. This is then followed with a chapter on the importance

of including privacy aspects at the design stage itself. This chapter by two leading

researchers in the field shows the importance of Big Data in dealing with privacy

issues and how they could be better addressed by incorporating privacy aspects at

the design stage itself. The team of researchers from a major research university in

the USA addresses the importance of federated Big Data. They are looking at the

use of distributed data in applications. This part is concluded with a chapter that

ix

xPreface

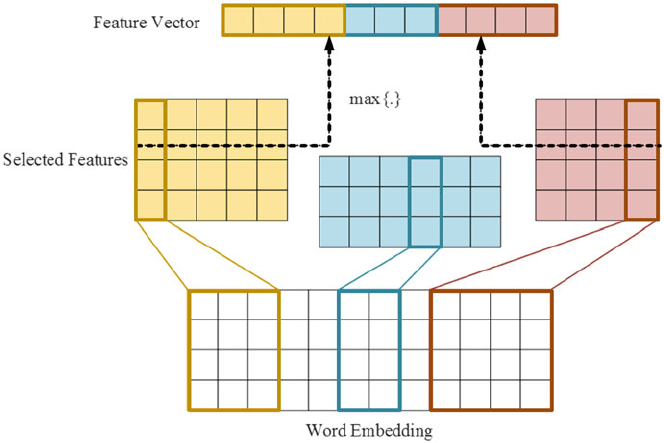

shows the importance of word embedding and natural language processing using

Big Data analysis.

In Part II, there are eight chapters on the applications of Big Data in science.

Science is an important area where decision making could be enhanced on the

way to approach a problem using data analysis. The applications selected here

deal with Environmental Science, High Performance Computing (HPC), friendship

paradox in noting which friend’s influence will be significant, significance of

using encrypted search with Big Data, importance of deduplication in Big Data

especially when data is collected from multiple sources, applications in Oil &

Gas and how decision making can be enhanced in identifying bridges that need

to be replaced as part of meeting safety requirements. All these application areas

selected for inclusion in this collection show the diversity of fields in which Big

Data is used today. The Environmental Science application shows how the data

published by the National Oceanic and Atmospheric Administration (NOAA) is

used to study the environment. Since such datasets are very large, specialized tools

are needed to benefit from them. In this chapter the authors show how Big Data

tools help in this effort. The team of industry practitioners discuss how there is

great similarity in the way HPC deals with low-latency, massively parallel systems

and distributed systems. These are all typical of how Big Data is used using tools

such as MapReduce, Hadoop and Spark. Quora is a leading provider of answers to

user queries and in this context one of their data scientists is addressing how the

Friendship paradox is playing a significant part in Quora answers. This is a classic

illustration of a Big Data application using social media.

Big Data applications in science exist in many branches and it is very heavily

used in the Oil and Gas industry. Two chapters that address the Oil and Gas

application are written by two sets of people with extensive industry experience.

Two specific chapters are devoted to how Big Data is used in deduplication practices

involving multimedia data in the cloud and how privacy-aware searches are done

over encrypted data. Today, people are very concerned about the security of data

stored with an application provider. Encryption is the preferred tool to protect such

data and so having an efficient way to search such encrypted data is important.

This chapter’s contribution in this regard will be of great benefit for many users.

We conclude Part II with a chapter that shows how Big Data is used in noting the

structural safety of nation’s bridges. This practical application shows how Big Data

is used in many different ways.

Part III considers applications in medicine. A group of expert doctors from

leading medical institutions in the Bay Area discuss how Big Data is used in the

practice of medicine. This is one area where many more applications abound and the

interested reader is encouraged to look at such applications. Another chapter looks

at how data scientists are important in analyzing medical data. This chapter reflects

a view from Asia and discusses the roadmap for data science use in medicine.

Smoking has been noted as one of the leading causes of human suffering. This part

includes a chapter on comorbidity aspects related to smokers based on a Big Data

analysis. The details presented in this chapter would help the reader to focus on other

possible applications of Big Data in medicine, especially cancer. Finally, a chapter is

Preface xi

included that shows how scientific analysis of Big Data helps with epileptic seizure

prediction and control.

Part IV of the book deals with applications in Business. This is an area where

Big Data use is expected to provide tangible results quickly to businesses. The

three applications listed under this part include an application in banking, an

application in marketing and an application in Quick Serve Restaurants. The

banking application is written by a group of researchers in Europe. Their analysis

shows that the importance of identifying financial fraud early is a global problem

and how Big Data is used in this effort. The marketing application highlights the

various ways in which Big Data could be used in business. Many large business

sectors such as the airlines industry are using Big Data to set prices. The application

with respect to a Quick Serve Restaurant chain deals with the impact of Yelp ratings

and how it influences people’s use of Quick Serve Restaurants.

As mentioned at the outset, this collection of chapters on Big Data applications

is expected to serve as a sample for other applications in various fields. The readers

will find novel ways in which data from multiple sources is combined to derive

benefit for the general user. Also, in specific areas such as medicine, the use of Big

Data is having profound impact in opening up new areas for exploration based on

the availability of large volumes of data. These are all having practical applications

that help extend people’s lives. I earnestly hope that this collection of applications

will spur the interest of the reader to look at novel ways of using Big Data.

This book is a collective effort of many people. The contributors to this book

come from North America, Europe and Asia. This diversity shows that Big Data is

a truly global way in which people use the data to enhance their decision-making

capabilities and to derive practical benefits. The book greatly benefited from the

careful review by many reviewers who provided detailed feedback in a timely

manner. I have carefully checked all chapters for consistency of information in

content and appearance. In spite of careful checking and taking advantage of the

tools provided by technology, it is highly likely that some errors might have crept in

to the chapter content. In such cases I take responsibility for such errors and request

your help in bringing them to my attention so that they can be corrected in future

editions.

Houston, TX, USA S. Srinivasan

December 15, 2016

Acknowledgements

A project of this nature would be possible only with the collective efforts of many

people. Initially I proposed the project to Springer, New York, over two years ago.

Springer expressed interest in the proposal and one of their editors, Ms. Mary

James, contacted me to discuss the details. After extensive discussions with major

researchers around the world we finally settled on this approach. A global Call for

Chapters was made in January 2016 both by me and Springer, New York, through

their channels of communication. Ms. Mary James helped throughout the project

by providing answers to questions that arose. In this context, I want to mention

the support of Ms. Brinda Megasyamalan from the printing house of Springer.

Ms. Megasyamalan has been a constant source of information as the project

progressed. Ms. Subhashree Rajan from the publishing arm of Springer has been

extremely cooperative and patient in getting all the page proofs and incorporating

all the corrections. Ms. Mary James provided all the encouragement and support

throughout the project by responding to inquiries in a timely manner.

The reviewers played a very important role in maintaining the quality of this

publication by their thorough reviews. We followed a double-blind review process

whereby the reviewers were unaware of the identity of the authors and vice versa.

This helped in providing quality feedback. All the authors cooperated very well by

incorporating the reviewers’ suggestions and submitting their final chapters within

the time allotted for that purpose. I want to thank individually all the reviewers and

all the authors for their dedication and contribution to this collective effort.

I want to express my sincere thanks to Dr. Gari Clifford of Emory University and

Georgia Institute of Technology in providing the Foreword to this publication. In

spite of his many commitments, Dr. Clifford was able to find the time to go over all

the Abstracts and write the Foreword without delaying the project.

Finally, I want to express my sincere appreciation to my wife for accommodating

the many special needs when working on a project of this nature.

xiii

Contents

Part I General

1 Strategic Applications of Big Data ........................................ 3

Joe Weinman

2 Start with Privacy by Design in All Big Data Applications ............ 29

Ann Cavoukian and Michelle Chibba

3 Privacy Preserving Federated Big Data Analysis ....................... 49

Wenrui Dai, Shuang Wang, Hongkai Xiong, and Xiaoqian Jiang

4 Word Embedding for Understanding Natural Language:

A Survey ..................................................................... 83

Yang Li and Tao Yang

Part II Applications in Science

5 Big Data Solutions to Interpreting Complex Systems in the

Environment ................................................................ 107

Hongmei Chi, Sharmini Pitter, Nan Li, and Haiyan Tian

6 High Performance Computing and Big Data ............................ 125

Rishi Divate, Sankalp Sah, and Manish Singh

7 Managing Uncertainty in Large-Scale Inversions for the Oil

and Gas Industry with Big Data .......................................... 149

Jiefu Chen, Yueqin Huang, Tommy L. Binford Jr., and Xuqing Wu

8 Big Data in Oil & Gas and Petrophysics ................................. 175

Mark Kerzner and Pierre Jean Daniel

9 Friendship Paradoxes on Quora .......................................... 205

Shankar Iyer

10 Deduplication Practices for Multimedia Data in the Cloud ........... 245

Fatema Rashid and Ali Miri

xv

xvi Contents

11 Privacy-Aware Search and Computation Over Encrypted Data

Stores......................................................................... 273

Hoi Ting Poon and Ali Miri

12 Civil Infrastructure Serviceability Evaluation Based on Big Data.... 295

Yu Liang, Dalei Wu, Dryver Huston, Guirong Liu, Yaohang Li,

Cuilan Gao, and Zhongguo John Ma

Part III Applications in Medicine

13 Nonlinear Dynamical Systems with Chaos and Big Data:

A Case Study of Epileptic Seizure Prediction and Control ............ 329

Ashfaque Shafique, Mohamed Sayeed, and Konstantinos Tsakalis

14 Big Data to Big Knowledge for Next Generation Medicine:

A Data Science Roadmap .................................................. 371

Tavpritesh Sethi

15 Time-Based Comorbidity in Patients Diagnosed with Tobacco

Use Disorder................................................................. 401

Pankush Kalgotra, Ramesh Sharda, Bhargav Molaka,

and Samsheel Kathuri

16 The Impact of Big Data on the Physician ................................ 415

Elizabeth Le, Sowmya Iyer, Teja Patil, Ron Li, Jonathan H. Chen,

Michael Wang, and Erica Sobel

Part IV Applications in Business

17 The Potential of Big Data in Banking .................................... 451

Rimvydas Skyrius, Gintar˙

eGiri

¯

unien˙

e, Igor Katin,

Michail Kazimianec, and Raimundas Žilinskas

18 Marketing Applications Using Big Data ................................. 487

S. Srinivasan

19 Does Yelp Matter? Analyzing (And Guide to Using) Ratings

for a Quick Serve Restaurant Chain ..................................... 503

Bogdan Gadidov and Jennifer Lewis Priestley

Author Biographies .............................................................. 523

Index............................................................................... 553

List of Reviewers

Maruthi Bhaskar

Jay Brandi

Jorge Brusa

Arnaub Chatterjee

Robert Evans

Aly Farag

Lila Ghemri

Ben Hu

Balaji Janamanchi

Mehmed Kantardzic

Mark Kerzner

Ashok Krishnamurthy

Angabin Matin

Hector Miranda

P. S. Raju

S. Srinivasan

Rakesh Verma

Daniel Vrinceanu

Haibo Wang

Xuqing Wu

Alec Yasinsac

xvii

Part I

General

Chapter 1

Strategic Applications of Big Data

Joe Weinman

1.1 Introduction

For many people, big data is somehow virtually synonymous with one application—

marketing analytics—in one vertical—retail. For example, by collecting purchase

transaction data from shoppers based on loyalty cards or other unique identifiers

such as telephone numbers, account numbers, or email addresses, a company can

segment those customers better and identify promotions that will boost profitable

revenues, either through insights derived from the data, A/B testing, bundling, or

the like. Such insights can be extended almost without bound. For example, through

sophisticated analytics, Harrah’s determined that its most profitable customers

weren’t “gold cuff-linked, limousine-riding high rollers,” but rather teachers, doc-

tors, and even machinists (Loveman 2003). Not only did they come to understand

who their best customers were, but how they behaved and responded to promotions.

For example, their target customers were more interested in an offer of $60 worth of

chips than a total bundle worth much more than that, including a room and multiple

steak dinners in addition to chips.

While marketing such as this is a great application of big data and analytics, the

reality is that big data has numerous strategic business applications across every

industry vertical. Moreover, there are many sources of big data available from a

company’s day-to-day business activities as well as through open data initiatives,

such as data.gov in the U.S., a source with almost 200,000 datasets at the time of

this writing.

To apply big data to critical areas of the firm, there are four major generic

approaches that companies can use to deliver unparalleled customer value and

J. Weinman ()

Independent Consultant, Flanders, NJ 07836, USA

e-mail: joeweinman@gmail.com

© Springer International Publishing AG 2018

S. Srinivasan (ed.), Guide to Big Data Applications, Studies in Big Data 26,

DOI 10.1007/978-3-319-53817-4_1

3

4 J. Weinman

achieve strategic competitive advantage: better processes, better products and

services, better customer relationships, and better innovation.

1.1.1 Better Processes

Big data can be used to optimize processes and asset utilization in real time, to

improve them in the long term, and to generate net new revenues by entering

new businesses or at least monetizing data generated by those processes. UPS

optimizes pickups and deliveries across its 55,000 routes by leveraging data ranging

from geospatial and navigation data to customer pickup constraints (Rosenbush

and Stevens 2015). Or consider 23andMe, which has sold genetic data it collects

from individuals. One such deal with Genentech focused on Parkinson’s disease

gained net new revenues of fifty million dollars, rivaling the revenues from its “core”

business (Lee 2015).

1.1.2 Better Products and Services

Big data can be used to enrich the quality of customer solutions, moving them up

the experience economy curve from mere products or services to experiences or

transformations. For example, Nike used to sell sneakers, a product. However, by

collecting and aggregating activity data from customers, it can help transform them

into better athletes. By linking data from Nike products and apps with data from

ecosystem solution elements, such as weight scales and body-fat analyzers, Nike

can increase customer loyalty and tie activities to outcomes (Withings 2014).

1.1.3 Better Customer Relationships

Rather than merely viewing data as a crowbar with which to open customers’ wallets

a bit wider through targeted promotions, it can be used to develop deeper insights

into each customer, thus providing better service and customer experience in the

short term and products and services better tailored to customers as individuals

in the long term. Netflix collects data on customer activities, behaviors, contexts,

demographics, and intents to better tailor movie recommendations (Amatriain

2013). Better recommendations enhance customer satisfaction and value which

in turn makes these customers more likely to stay with Netflix in the long term,

reducing churn and customer acquisition costs, as well as enhancing referral (word-

of-mouth) marketing. Harrah’s determined that customers that were “very happy”

with their customer experience increased their spend by 24% annually; those that

were unhappy decreased their spend by 10% annually (Loveman 2003).

1 Strategic Applications of Big Data 5

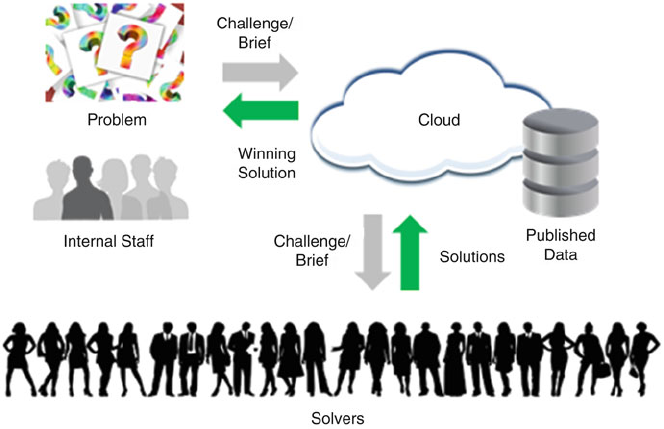

1.1.4 Better Innovation

Data can be used to accelerate the innovation process, and make it of higher quality,

all while lowering cost. Data sets can be published or otherwise incorporated as

part of an open contest or challenge, enabling ad hoc solvers to identify a best

solution meeting requirements. For example, GE Flight Quest incorporated data

on scheduled and actual flight departure and arrival times, for a contest intended

to devise algorithms to better predict arrival times, and another one intended to

improve them (Kaggle n.d.). As the nexus of innovation moves from man to

machine, data becomes the fuel on which machine innovation engines run.

These four business strategies are what I call digital disciplines (Weinman

2015), and represent an evolution of three customer-focused strategies called value

disciplines, originally devised by Michael Treacy and Fred Wiersema in their inter-

national bestseller The Discipline of Market Leaders (Treacy and Wiersema 1995).

1.2 From Value Disciplines to Digital Disciplines

The value disciplines originally identified by Treacy and Wiersema are operational

excellence,product leadership, and customer intimacy.

Operational excellence entails processes which generate customer value by being

lower cost or more convenient than those of competitors. For example, Michael

Dell, operating as a college student out of a dorm room, introduced an assemble-

to-order process for PCs by utilizing a direct channel which was originally the

phone or physical mail and then became the Internet and eCommerce. He was

able to drive the price down, make it easier to order, and provide a PC built to

customers’ specifications by creating a new assemble-to-order process that bypassed

indirect channel middlemen that stocked pre-built machines en masse, who offered

no customization but charged a markup nevertheless.

Product leadership involves creating leading-edge products (or services) that

deliver superior value to customers. We all know the companies that do this: Rolex

in watches, Four Seasons in lodging, Singapore Airlines or Emirates in air travel.

Treacy and Wiersema considered innovation as being virtually synonymous with

product leadership, under the theory that leading products must be differentiated in

some way, typically through some innovation in design, engineering, or technology.

Customer intimacy, according to Treacy and Wiersema, is focused on segmenting

markets, better understanding the unique needs of those niches, and tailoring solu-

tions to meet those needs. This applies to both consumer and business markets. For

example, a company that delivers packages might understand a major customer’s

needs intimately, and then tailor a solution involving stocking critical parts at

their distribution centers, reducing the time needed to get those products to their

customers. In the consumer world, customer intimacy is at work any time a tailor

adjusts a garment for a perfect fit, a bartender customizes a drink, or a doctor

diagnoses and treats a medical issue.

6 J. Weinman

Traditionally, the thinking was that a company would do well to excel in a given

discipline, and that the disciplines were to a large extent mutually exclusive. For

example, a fast food restaurant might serve a limited menu to enhance operational

excellence. A product leadership strategy of having many different menu items, or a

customer intimacy strategy of customizing each and every meal might conflict with

the operational excellence strategy. However, now, the economics of information—

storage prices are exponentially decreasing and data, once acquired, can be lever-

aged elsewhere—and the increasing flexibility of automation—such as robotics—

mean that companies can potentially pursue multiple strategies simultaneously.

Digital technologies such as big data enable new ways to think about the insights

originally derived by Treacy and Wiersema. Another way to think about it is that

digital technologies plus value disciplines equal digital disciplines: operational

excellence evolves to information excellence, product leadership of standalone

products and services becomes solution leadership of smart, digital products and

services connected to the cloud and ecosystems, customer intimacy expands to

collective intimacy, and traditional innovation becomes accelerated innovation.In

the digital disciplines framework, innovation becomes a separate discipline, because

innovation applies not only to products, but also processes, customer relationships,

and even the innovation process itself. Each of these new strategies can be enabled

by big data in profound ways.

1.2.1 Information Excellence

Operational excellence can be viewed as evolving to information excellence, where

digital information helps optimize physical operations including their processes and

resource utilization; where the world of digital information can seamlessly fuse

with that of physical operations; and where virtual worlds can replace physical.

Moreover, data can be extracted from processes to enable long term process

improvement, data collected by processes can be monetized, and new forms of

corporate structure based on loosely coupled partners can replace traditional,

monolithic, vertically integrated companies. As one example, location data from

cell phones can be aggregated and analyzed to determine commuter traffic patterns,

thereby helping to plan transportation network improvements.

1.2.2 Solution Leadership

Products and services can become sources of big data, or utilize big data to

function more effectively. Because individual products are typically limited in

storage capacity, and because there are benefits to data aggregation and cloud

processing, normally the data that is collected can be stored and processed in the

cloud. A good example might be the GE GEnx jet engine, which collects 5000

1 Strategic Applications of Big Data 7

data points each second from each of 20 sensors. GE then uses the data to develop

better predictive maintenance algorithms, thus reducing unplanned downtime for

airlines. (GE Aviation n.d.) Mere product leadership becomes solution leadership,

where standalone products become cloud-connected and data-intensive. Services

can also become solutions, because services are almost always delivered through

physical elements: food services through restaurants and ovens; airline services

through planes and baggage conveyors; healthcare services through x-ray machines

and pacemakers. The components of such services connect to each other and exter-

nally. For example, healthcare services can be better delivered through connected

pacemakers, and medical diagnostic data from multiple individual devices can be

aggregated to create a patient-centric view to improve health outcomes.

1.2.3 Collective Intimacy

Customer intimacy is no longer about dividing markets into segments, but rather

dividing markets into individuals, or even further into multiple personas that an

individual might have. Personalization and contextualization offers the ability to

not just deliver products and services tailored to a segment, but to an individual.

To do this effectively requires current, up-to-date information as well as historical

data, collected at the level of the individual and his or her individual activities

and characteristics down to the granularity of DNA sequences and mouse moves.

Collective intimacy is the notion that algorithms running on collective data from

millions of individuals can generate better tailored services for each individual.

This represents the evolution of intimacy from face-to-face, human-mediated

relationships to virtual, human-mediated relationships over social media, and from

there, onward to virtual, algorithmically mediated products and services.

1.2.4 Accelerated Innovation

Finally, innovation is not just associated with product leadership, but can create new

processes, as Walmart did with cross-docking or Uber with transportation, or new

customer relationships and collective intimacy, as Amazon.com uses data to better

upsell/cross-sell, and as Netflix innovated its Cinematch recommendation engine.

The latter was famously done through the Netflix Prize, a contest with a million

dollar award for whoever could best improve Cinematch by at least 10% (Bennett

and Lanning 2007). Such accelerated innovation can be faster, cheaper, and better

than traditional means of innovation. Often, such approaches exploit technologies

such as the cloud and big data. The cloud is the mechanism for reaching multiple

potential solvers on an ad hoc basis, with published big data being the fuel for

problem solving. For example, Netflix published anonymized customer ratings of

movies, and General Electric published planned and actual flight arrival times.

8 J. Weinman

Today, machine learning and deep learning based on big data sets are a means

by which algorithms are innovating themselves. Google DeepMind’s AlphaGo Go-

playing system beat the human world champion at Go, Lee Sedol, partly based on

learning how to play by not only “studying” tens of thousands of human games, but

also by playing an increasingly tougher competitor: itself (Moyer 2016).

1.2.5 Value Disciplines to Digital Disciplines

The three classic value disciplines of operational excellence, product leadership and

customer intimacy become transformed in a world of big data and complementary

digital technologies to become information excellence, solution leadership, collec-

tive intimacy, and accelerated innovation. These represent four generic strategies

that leverage big data in the service of strategic competitive differentiation; four

generic strategies that represent the horizontal applications of big data.

1.3 Information Excellence

Most of human history has been centered on the physical world. Hunting and

gathering, fishing, agriculture, mining, and eventually manufacturing and physical

operations such as shipping, rail, and eventually air transport. It’s not news that the

focus of human affairs is increasingly digital, but the many ways in which digital

information can complement, supplant, enable, optimize, or monetize physical

operations may be surprising. As more of the world becomes digital, the use of

information, which after all comes from data, becomes more important in the

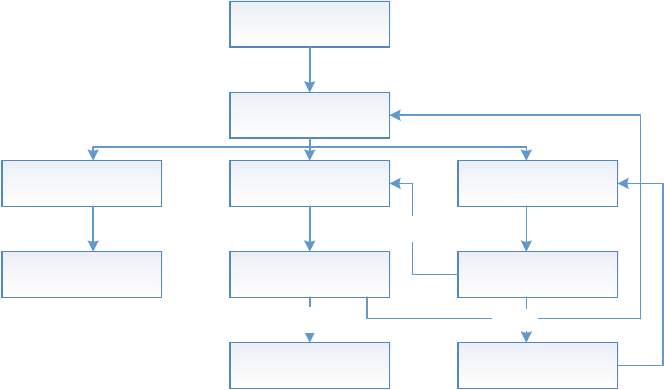

spheres of business, government, and society (Fig. 1.1).

1.3.1 Real-Time Process and Resource Optimization

There are numerous business functions, such as legal, human resources, finance,

engineering, and sales, and a variety of ways in which different companies in a

variety of verticals such as automotive, healthcare, logistics, or pharmaceuticals

configure these functions into end-to-end processes. Examples of processes might

be “claims processing” or “order to delivery” or “hire to fire”. These in turn use a

variety of resources such as people, trucks, factories, equipment, and information

technology.

Data can be used to optimize resource use as well as to optimize processes for

goals such as cycle time, cost, or quality.

Some good examples of the use of big data to optimize processes are inventory

management/sales forecasting, port operations, and package delivery logistics.

1 Strategic Applications of Big Data 9

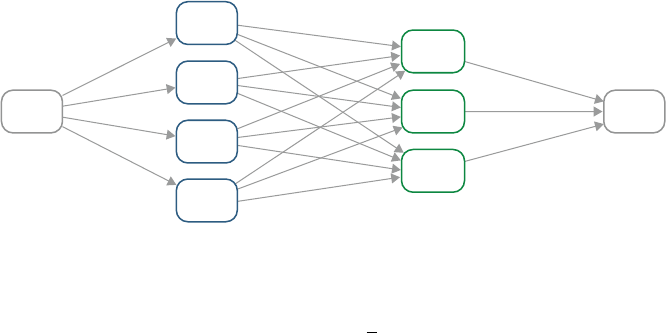

Fig. 1.1 High-level architecture for information excellence

Too much inventory is a bad thing, because there are costs to holding inventory:

the capital invested in the inventory, risk of disaster, such as a warehouse fire,

insurance, floor space, obsolescence, shrinkage (i.e., theft), and so forth. Too little

inventory is also bad, because not only may a sale be lost, but the prospect may

go elsewhere to acquire the good, realize that the competitor is a fine place to

shop, and never return. Big data can help with sales forecasting and thus setting

correct inventory levels. It can also help to develop insights, which may be subtle

or counterintuitive. For example, when Hurricane Frances was projected to strike

Florida, analytics helped stock stores, not only with “obvious” items such as

bottled water and flashlights, but non-obvious products such as strawberry Pop-Tarts

(Hayes 2004). This insight was based on mining store transaction data from prior

hurricanes.

Consider a modern container port. There are multiple goals, such as minimizing

the time ships are in port to maximize their productivity, minimizing the time ships

or rail cars are idle, ensuring the right containers get to the correct destinations,

maximizing safety, and so on. In addition, there may be many types of structured

and unstructured data, such as shipping manifests, video surveillance feeds of roads

leading to and within the port, data on bridges, loading cranes, weather forecast data,

truck license plates, and so on. All of these data sources can be used to optimize port

operations in line with the multiple goals (Xvela 2016).

Or consider a logistics firm such as UPS. UPS has invested hundreds of millions

of dollars in ORION (On-Road Integrated Optimization and Navigation). It takes

data such as physical mapping data regarding roads, delivery objectives for each

package, customer data such as when customers are willing to accept deliveries,

and the like. For each of 55,000 routes, ORION determines the optimal sequence of

10 J. Weinman

an average of 120 stops per route. The combinatorics here are staggering, since there

are roughly 10**200 different possible sequences, making it impossible to calculate

a perfectly optimal route, but heuristics can take all this data and try to determine

the best way to sequence stops and route delivery trucks to minimize idling time,

time waiting to make left turns, fuel consumption and thus carbon footprint, and

to maximize driver labor productivity and truck asset utilization, all the while

balancing out customer satisfaction and on-time deliveries. Moreover real-time data

such as geographic location, traffic congestion, weather, and fuel consumption, can

be exploited for further optimization (Rosenbush and Stevens 2015).

Such capabilities could also be used to not just minimize time or maximize

throughput, but also to maximize revenue. For example, a theme park could

determine the optimal location for a mobile ice cream or face painting stand,

based on prior customer purchases and exact location of customers within the

park. Customers’ locations and identities could be identified through dedicated long

range radios, as Disney does with MagicBands; through smartphones, as Singtel’s

DataSpark unit does (see below); or through their use of related geographically

oriented services or apps, such as Uber or Foursquare.

1.3.2 Long-Term Process Improvement

In addition to such real-time or short-term process optimization, big data can also

be used to optimize processes and resources over the long term.

For example, DataSpark, a unit of Singtel (a Singaporean telephone company)

has been extracting data from cell phone locations to be able to improve the

MTR (Singapore’s subway system) and customer experience (Dataspark 2016).

For example, suppose that GPS data showed that many subway passengers were

traveling between two stops but that they had to travel through a third stop—a hub—

to get there. By building a direct line to bypass the intermediate stop, travelers could

get to their destination sooner, and congestion could be relieved at the intermediate

stop as well as on some of the trains leading to it. Moreover, this data could also be

used for real-time process optimization, by directing customers to avoid a congested

area or line suffering an outage through the use of an alternate route. Obviously a

variety of structured and unstructured data could be used to accomplish both short-

term and long-term improvements, such as GPS data, passenger mobile accounts

and ticket purchases, video feeds of train stations, train location data, and the like.

1.3.3 Digital-Physical Substitution and Fusion

The digital world and the physical world can be brought together in a number of

ways. One way is substitution, as when a virtual audio, video, and/or web conference

substitutes for physical airline travel, or when an online publication substitutes for

1 Strategic Applications of Big Data 11

a physically printed copy. Another way to bring together the digital and physical

worlds is fusion, where both online and offline experiences become seamlessly

merged. An example is in omni-channel marketing, where a customer might browse

online, order online for pickup in store, and then return an item via the mail. Or, a

customer might browse in the store, only to find the correct size out of stock, and

order in store for home delivery. Managing data across the customer journey can

provide a single view of the customer to maximize sales and share of wallet for that

customer. This might include analytics around customer online browsing behavior,

such as what they searched for, which styles and colors caught their eye, or what

they put into their shopping cart. Within the store, patterns of behavior can also be

identified, such as whether people of a certain demographic or gender tend to turn

left or right upon entering the store.

1.3.4 Exhaust-Data Monetization

Processes which are instrumented and monitored can generate massive amounts

of data. This data can often be monetized or otherwise create benefits in creative

ways. For example, Uber’s main business is often referred to as “ride sharing,”

which is really just offering short term ground transportation to passengers desirous

of rides by matching them up with drivers who can give them rides. However, in

an arrangement with the city of Boston, it will provide ride pickup and drop-off

locations, dates, and times. The city will use the data for traffic engineering, zoning,

and even determining the right number of parking spots needed (O’Brien 2015).

Such inferences can be surprisingly subtle. Consider the case of a revolving door

firm that could predict retail trends and perhaps even recessions. Fewer shoppers

visiting retail stores means fewer shoppers entering via the revolving door. This

means lower usage of the door, and thus fewer maintenance calls.

Another good example is 23andMe. 23andMe is a firm that was set up to leverage

new low cost gene sequence technologies. A 23andMe customer would take a saliva

sample and mail it to 23andMe, which would then sequence the DNA and inform

the customer about certain genetically based risks they might face, such as markers

signaling increased likelihood of breast cancer due to a variant in the BRCA1 gene.

They also would provide additional types of information based on this sequence,

such as clarifying genetic relationships among siblings or questions of paternity.

After compiling massive amounts of data, they were able to monetize the

collected data outside of their core business. In one $50 million deal, they sold data

from Parkinson’s patients to Genentech, with the objective of developing a cure

for Parkinson’s through deep analytics (Lee 2015). Note that not only is the deal

lucrative, especially since essentially no additional costs were incurred to sell this

data, but also highly ethical. Parkinson’s patients would like nothing better than for

Genentech—or anybody else, for that matter—to develop a cure.

12 J. Weinman

1.3.5 Dynamic, Networked, Virtual Corporations

Processes don’t need to be restricted to the four walls of the corporation. For

example, supply chain optimization requires data from suppliers, channels, and

logistics companies. Many companies have focused on their core business and

outsourced or partnered with others to create and continuously improve supply

chains. For example, Apple sells products, but focuses on design and marketing,

not manufacturing. As many people know, Apple products are built by a partner,

Foxconn, with expertise in precision manufacturing electronic products.

One step beyond such partnerships or virtual corporations are dynamic, net-

worked virtual corporations. An example is Li & Fung. Apple sells products such

as iPhones and iPads, without owning any manufacturing facilities. Similarly, Li &

Fung sells products, namely clothing, without owning any manufacturing facilities.

However, unlike Apple, who relies largely on one main manufacturing partner; Li &

Fung relies on a network of over 10,000 suppliers. Moreover, the exact configuration

of those suppliers can change week by week or even day by day, even for the same

garment. A shirt, for example, might be sewed in Indonesia with buttons from

Thailand and fabric from S. Korea. That same SKU, a few days later, might be

made in China with buttons from Japan and fabric from Vietnam. The constellation

of suppliers is continuously optimized, by utilizing data on supplier resource

availability and pricing, transportation costs, and so forth (Wind et al. 2009).

1.3.6 Beyond Business

Information excellence also applies to governmental and societal objectives. Earlier

we mentioned using big data to improve the Singapore subway operations and

customer experience; later we’ll mention how it’s being used to improve traffic

congestion in Rio de Janeiro. As an example of societal objectives, consider the

successful delivery of vaccines to remote areas. Vaccines can lose their efficacy

or even become unsafe unless they are refrigerated, but delivery to outlying areas

can mean a variety of transport mechanisms and intermediaries. For this reason,

it is important to ensure that they remain refrigerated across their “cold chain.”

A low-tech method could potentially warn of unsafe vaccines: for example, put a

container of milk in with the vaccines, and if the milk spoils it will smell bad and the

vaccines are probably bad as well. However, by collecting data wirelessly from the

refrigerators throughout the delivery process, not only can it be determined whether

the vaccines are good or bad, but improvements can be made to the delivery process

by identifying the root cause of the loss of refrigeration, for example, loss of power

at a particular port, and thus steps can be taken to mitigate the problem, such as the

deployment of backup power generators (Weinman 2016).

1 Strategic Applications of Big Data 13

1.4 Solution Leadership

Products (and services) were traditionally standalone and manual, but now have

become connected and automated. Products and services now connect to the cloud

and from there on to ecosystems. The ecosystems can help collect data, analyze it,

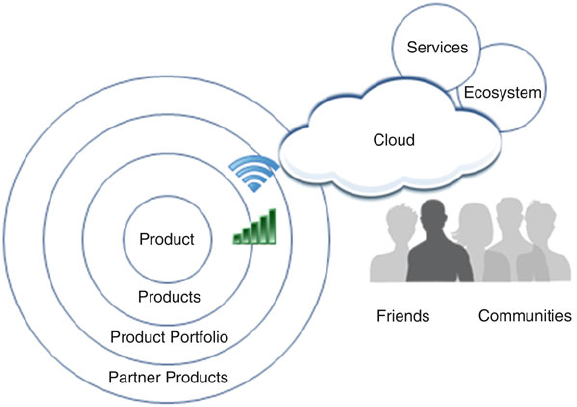

provide data to the products or services, or all of the above (Fig. 1.2).

1.4.1 Digital-Physical Mirroring

In product engineering, an emerging approach is to build a data-driven engineering

model of a complex product. For example, GE mirrors its jet engines with “digital

twins” or “virtual machines” (unrelated to the computing concept of the same

name). The idea is that features, engineering design changes, and the like can be

made to the model much more easily and cheaply than building an actual working jet

engine. A new turbofan blade material with different weight, brittleness, and cross

section might be simulated to determine impacts on overall engine performance.

To do this requires product and materials data. Moreover, predictive analytics can

be run against massive amounts of data collected from operating engines (Warwick

2015).

Fig. 1.2 High-level architecture for solution leadership

14 J. Weinman

1.4.2 Real-Time Product/Service Optimization

Recall that solutions are smart, digital, connected products that tie over networks to

the cloud and from there onward to unbounded ecosystems. As a result, the actual

tangible, physical product component functionality can potentially evolve over time

as the virtual, digital components adapt. As two examples, consider a browser that

provides “autocomplete” functions in its search bar, i.e., typing shortcuts based

on previous searches, thus saving time and effort. Or, consider a Tesla, whose

performance is improved by evaluating massive quantities of data from all the Teslas

on the road and their performance. As Tesla CEO Elon Musk says, “When one car

learns something, the whole fleet learns” (Coren 2016).

1.4.3 Product/Service Usage Optimization

Products or services can be used better by customers by collecting data and pro-

viding feedback to customers. The Ford Fusion’s EcoGuide SmartGauge provides

feedback to drivers on their fuel efficiency. Jackrabbit starts are bad; smooth driving

is good. The EcoGuide SmartGauge grows “green” leaves to provide drivers with

feedback, and is one of the innovations credited with dramatically boosting sales of

the car (Roy 2009).

GE Aviation’s Flight Efficiency Services uses data collected from numerous

flights to determine best practices to maximize fuel efficiency, ultimately improving

airlines carbon footprint and profitability. This is an enormous opportunity, because

it’s been estimated that one-fifth of fuel is wasted due to factors such as suboptimal

fuel usage and inefficient routing. For example, voluminous data and quantitative

analytics were used to develop a business case to gain approval from the Malaysian

Directorate of Civil Aviation for AirAsia to use single-engine taxiing. This con-

serves fuel because only one engine is used to taxi, rather than all the engines

running while the plane is essentially stuck on the runway.

Perhaps one of the most interesting examples of using big data to optimize

products and services comes from a company called Opower, which was acquired

by Oracle. It acquires data on buildings, such as year built, square footage, and

usage, e.g., residence, hair salon, real estate office. It also collects data from smart

meters on actual electricity consumption. By combining all of this together, it

can message customers such as businesses and homeowners with specific, targeted

insights, such as that a particular hair salon’s electricity consumption is higher than

80% of hair salons of similar size in the area built to the same building code (and

thus equivalently insulated). Such “social proof” gamification has been shown to

be extremely effective in changing behavior compared to other techniques such as

rational quantitative financial comparisons (Weinman 2015).

1 Strategic Applications of Big Data 15

1.4.4 Predictive Analytics and Predictive Maintenance

Collecting data from things and analyzing it can enable predictive analytics and

predictive maintenance. For example, the GE GEnx jet engine has 20 or so sensors,

each of which collects 5000 data points per second in areas such as oil pressure,

fuel flow and rotation speed. This data can then be used to build models and identify

anomalies and predict when the engine will fail.

This in turn means that airline maintenance crews can “fix” an engine before it

fails. This maximizes what the airlines call “time on wing,” in other words, engine

availability. Moreover, engines can be proactively repaired at optimal times and

optimal locations, where maintenance equipment, crews, and spare parts are kept

(Weinman 2015).

1.4.5 Product-Service System Solutions

When formerly standalone products become connected to back-end services and

solve customer problems they become product-service system solutions. Data can

be the glue that holds the solution together. A good example is Nike and the

NikeCecosystem.

Nike has a number of mechanisms for collecting activity tracking data, such as

the NikeCFuelBand, mobile apps, and partner products, such as NikeCKinect

which is a video “game” that coaches you through various workouts. These can

collect data on activities, such as running or bicycling or doing jumping jacks. Data

can be collected, such as the route taken on a run, and normalized into “NikeFuel”

points (Weinman 2015).

Other elements of the ecosystem can measure outcomes. For example, a variety

of scales can measure weight, but the Withings Smart Body Analyzer can also

measure body fat percentage, and link that data to NikeFuel points (Choquel 2014).

By linking devices measuring outcomes to devices monitoring activities—with

the linkages being data traversing networks—individuals can better achieve their

personal goals to become better athletes, lose a little weight, or get more toned.

1.4.6 Long-Term Product Improvement

Actual data on how products are used can ultimately be used for long-term product

improvement. For example, a cable company can collect data on the pattern of

button presses on its remote controls. A repeated pattern of clicking around the

“Guide” button fruitlessly and then finally ordering an “On Demand” movie might

lead to a clearer placement of a dedicated “On Demand” button on the control. Car

companies such as Tesla can collect data on actual usage, say, to determine how

16 J. Weinman

many batteries to put in each vehicle based on the statistics of distances driven;

airlines can determine what types of meals to offer; and so on.

1.4.7 The Experience Economy

In the Experience Economy framework, developed by Joe Pine and Jim Gilmore,

there is a five-level hierarchy of increasing customer value and firm profitability.

At the lowest level are commodities, which may be farmed, fished, or mined, e.g.,

coffee beans. At the next level of value are products, e.g., packaged, roasted coffee

beans. Still one level higher are services, such as a corner coffee bar. One level

above this are experiences, such as a fine French restaurant, which offers coffee

on the menu as part of a “total product” that encompasses ambience, romance, and

professional chefs and services. But, while experiences may be ephemeral, at the

ultimate level of the hierarchy lie transformations, which are permanent, such as

a university education, learning a foreign language, or having life-saving surgery

(Pine and Gilmore 1999).

1.4.8 Experiences

Experiences can be had without data or technology. For example, consider a hike

up a mountain to its summit followed by taking in the scenery and the fresh air.

However, data can also contribute to experiences. For example, Disney MagicBands

are long-range radios that tie to the cloud. Data on theme park guests can be used to

create magical, personalized experiences. For example, guests can sit at a restaurant

without expressly checking in, and their custom order will be brought to their

table, based on tracking through the MagicBands and data maintained in the cloud

regarding the individuals and their orders (Kuang 2015).

1.4.9 Transformations

Data can also be used to enable transformations. For example, the NikeCfamily

and ecosystem of solutions mentioned earlier can help individuals lose weight or

become better athletes. This can be done by capturing data from the individual on

steps taken, routes run, and other exercise activities undertaken, as well as results

data through connected scales and body fat monitors. As technology gets more

sophisticated, no doubt such automated solutions will do what any athletic coach

does, e.g., coaching on backswings, grip positions, stride lengths, pronation and the

like. This is how data can help enable transformations (Weinman 2015).

1 Strategic Applications of Big Data 17

1.4.10 Customer-Centered Product and Service Data

Integration

When multiple products and services each collect data, they can provide a 360ıview

of the patient. For example, patients are often scanned by radiological equipment

such as CT (computed tomography) scanners and X-ray machines. While individual

machines should be calibrated to deliver a safe dose, too many scans from too

many devices over too short a period can deliver doses over accepted limits, leading

potentially to dangers such as cancer. GE Dosewatch provides a single view of the

patient, integrating dose information from multiple medical devices from a variety

of manufacturers, not just GE (Combs 2014).

Similarly, financial companies are trying to develop a 360ıview of their

customers’ financial health. Rather than the brokerage division being run separately

from the mortgage division, which is separate from the retail bank, integrating data

from all these divisions can help ensure that the customer is neither over-leveraged

or underinvested.

1.4.11 Beyond Business

The use of connected refrigerators to help improve the cold chain was described

earlier in the context of information excellence for process improvement. Another

example of connected products and services is cities, such as Singapore, that help

reduce carbon footprint through connected parking garages. The parking lots report

how many spaces they have available, so that a driver looking for parking need not

drive all around the city: clearly visible digital signs and a mobile app describe how

many—if any—spaces are available (Abdullah 2015).

This same general strategy can be used with even greater impact in the devel-

oping world. For example, in some areas, children walk an hour or more to a well

to fill a bucket with water for their families. However, the well may have gone dry.

Connected, “smart” pump handles can report their usage, and inferences can be

made as to the state of the well. For example, a few pumps of the handle and then

no usage, another few pumps and then no usage, etc., is likely to signify someone

visiting the well, attempting to get water, then abandoning the effort due to lack of

success (ITU and Cisco 2016).

1.5 Collective Intimacy

At one extreme, a customer “relationship” is a one-time, anonymous transaction.

Consider a couple celebrating their 30th wedding anniversary with a once-in-a-

lifetime trip to Paris. While exploring the left bank, they buy a baguette and some

Brie from a hole-in-the-wall bistro. They will never see the bistro again, nor vice

versa.

18 J. Weinman

At the other extreme, there are companies and organizations that see customers

repeatedly. Amazon.com sees its customers’ patterns of purchases; Netflix sees its

customers’ patterns of viewing; Uber sees its customers’ patterns of pickups and

drop-offs. As other verticals become increasingly digital, they too will gain more

insight into customers as individuals, rather than anonymous masses. For example,

automobile insurers are increasingly pursuing “pay-as-you-drive,” or “usage-based”

insurance. Rather than customers’ premiums being merely based on aggregate,

coarse-grained information such as age, gender, and prior tickets, insurers can

charge premiums based on individual, real-time data such as driving over the speed

limit, weaving in between lanes, how congested the road is, and so forth.

Somewhere in between, there are firms that may not have any transaction history

with a given customer, but can use predictive analytics based on statistical insights

derived from large numbers of existing customers. Capital One, for example,

famously disrupted the existing market for credit cards by building models to create

“intimate” offers tailored to each prospect rather than a one-size fits all model

(Pham 2015).

Big data can also be used to analyze and model churn. Actions can be taken to

intercede before a customer has defected, thus retaining that customer and his or her

profits.

In short, big data can be used to determine target prospects, determine what to

offer them, maximize revenue and profitability, keep them, decide to let them defect

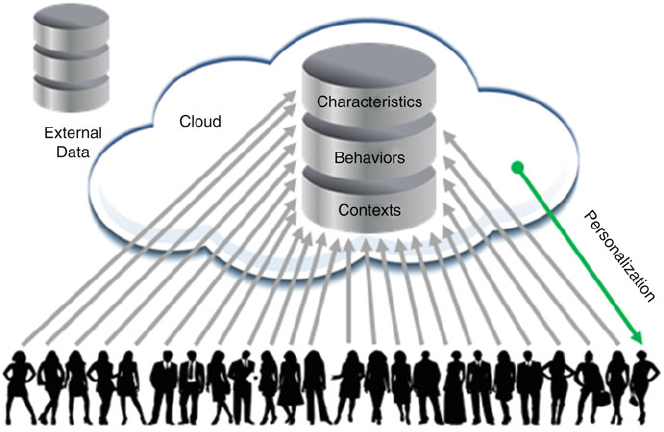

to a competitor, or win them back (Fig. 1.3).

Fig. 1.3 High-level architecture for collective intimacy

1 Strategic Applications of Big Data 19

1.5.1 Target Segments, Features and Bundles

A traditional arena for big data and analytics has been better marketing to customers

and market basket analysis. For example, one type of analysis entails identifying

prospects, clustering customers, non-buyers, and prospects as three groups: loyal

customers, who will buy your product no matter what; those who won’t buy no

matter what; and those that can be swayed. Marketing funds for advertising and

promotions are best spent with the last category, which will generate sales uplift.

A related type of analysis is market basket analysis, identifying those products

that might be bought together. Offering bundles of such products can increase profits

(Skiera and Olderog 2000). Even without bundles, better merchandising can goose

sales. For example, if new parents who buy diapers also buy beer, it makes sense to

put them in the aisle together. This may be extended to product features, where the

“bundle” isn’t a market basket but a basket of features built in to a product, say a

sport suspension package and a V8 engine in a sporty sedan.

1.5.2 Upsell/Cross-Sell

Amazon.com uses a variety of approaches to maximize revenue per customer. Some,

such as Amazon Prime, which offers free two-day shipping, are low tech and based

on behavioral economics principles such as the “flat-rate bias” and how humans

frame expenses such as sunk costs (Lambrecht and Skiera 2006). But they are

perhaps best known for their sophisticated algorithms, which do everything from

automating pricing decisions, making millions of price changes every day, (Falk

2013) and also in recommending additional products to buy, through a variety of

algorithmically generated capabilities such as “People Also Bought These Items”.

Some are reasonably obvious, such as, say, paper and ink suggestions if a copier

is bought or a mounting plate if a large flat screen TV is purchased. But many are

subtle, and based on deep analytics at scale of the billions of purchases that have

been made.

1.5.3 Recommendations

If Amazon.com is the poster child for upsell/cross-sell, Netflix is the one for a

pure recommendation engine. Because Netflix charges a flat rate for a household,

there is limited opportunity for upsell without changing the pricing model. Instead,

the primary opportunity is for customer retention, and perhaps secondarily, referral

marketing, i.e., recommendations from existing customers to their friends. The key

to that is maximizing the quality of the total customer experience. This has multiple

dimensions, such as whether DVDs arrive in a reasonable time or a streaming video

20 J. Weinman

plays cleanly at high resolution, as opposed to pausing to rebuffer frequently. But

one very important dimension is the quality of the entertainment recommendations,

because 70% of what Netflix viewers watch comes about through recommendations.

If viewers like the recommendations, they will like Netflix, and if they don’t, they

will cancel service. So, reduced churn and maximal lifetime customer value are

highly dependent on this (Amatriain 2013).

Netflix uses extremely sophisticated algorithms against trillions of data points,

which attempt to solve as best as possible the recommendation problem. For

example, they must balance out popularity with personalization. Most people like

popular movies; this is why they are popular. But every viewer is an individual,

hence will like different things. Netflix continuously evolves their recommendation

engine(s), which determine which options are presented when a user searches, what

is recommended based on what’s trending now, what is recommended based on

prior movies the user has watched, and so forth. This evolution spans a broad

set of mathematical and statistical methods and machine learning algorithms, such

as matrix factorization, restricted Boltzmann machines, latent Dirichlet allocation,

gradient boosted decision trees, and affinity propagation (Amatriain 2013). In addi-

tion, a variety of metrics—such as member retention and engagement time—and

experimentation techniques—such as offline experimentation and A/B testing—are

tuned for statistical validity and used to measure the success of the ensemble of

algorithms (Gomez-Uribe and Hunt 2015).

1.5.4 Sentiment Analysis

A particularly active current area in big data is the use of sophisticated algorithms to

determine an individual’s sentiment (Yegulalp 2015). For example, textual analysis

of tweets or posts can determine how a customer feels about a particular product.

Emerging techniques include emotional analysis of spoken utterances and even

sentiment analysis based on facial imaging.

Some enterprising companies are using sophisticated algorithms to conduct such

sentiment analysis at scale, in near real time, to buy or sell stocks based on how

sentiment is turning as well as additional analytics (Lin 2016).

1.5.5 Beyond Business

Such an approach is relevant beyond the realm of corporate affairs. For example,

a government could utilize a collective intimacy strategy in interacting with its