Wiley.The.Data.Warehouse.Toolkit.The..Guide.to.Dimensional.ing.Second.Edition

User Manual:

Open the PDF directly: View PDF ![]() .

.

Page Count: 447 [warning: Documents this large are best viewed by clicking the View PDF Link!]

John Wiley & Sons, Inc.

NEW YORK • CHICHESTER • WEINHEIM • BRISBANE • SINGAPORE • TORONTO

Wiley Computer Publishing

Ralph Kimball

Margy Ross

The Data Warehouse

Toolkit

Second Edition

The Complete Guide to

Dimensional Modeling

TEAMFLY

Team-Fly®

The Data Warehouse Toolkit

Second Edition

John Wiley & Sons, Inc.

NEW YORK • CHICHESTER • WEINHEIM • BRISBANE • SINGAPORE • TORONTO

Wiley Computer Publishing

Ralph Kimball

Margy Ross

The Data Warehouse

Toolkit

Second Edition

The Complete Guide to

Dimensional Modeling

Publisher: Robert Ipsen

Editor: Robert Elliott

Assistant Editor: Emilie Herman

Managing Editor: John Atkins

Associate New Media Editor: Brian Snapp

Text Composition: John Wiley Composition Services

Designations used by companies to distinguish their products are often claimed as trade-

marks. In all instances where John Wiley & Sons, Inc., is aware of a claim, the product names

appear in initial capital or ALL CAPITAL LETTERS. Readers, however, should contact the

appropriate companies for more complete information regarding trademarks and registration.

This book is printed on acid-free paper. ∞

Copyright © 2002 by Ralph Kimball and Margy Ross. All rights reserved.

Published by John Wiley and Sons, Inc.

Published simultaneously in Canada.

No part of this publication may be reproduced, stored in a retrieval system or transmitted

in any form or by any means, electronic, mechanical, photocopying, recording, scanning

or otherwise, except as permitted under Sections 107 or 108 of the 1976 United States

Copyright Act, without either the prior written permission of the Publisher, or authoriza-

tion through payment of the appropriate per-copy fee to the Copyright Clearance Center,

222 Rosewood Drive, Danvers, MA 01923, (978) 750-8400, fax (978) 750-4744. Requests

to the Publisher for permission should be addressed to the Permissions Department,

John Wiley & Sons, Inc., 605 Third Avenue, New York, NY 10158-0012, (212) 850-6011, fax

(212) 850-6008, E-Mail: PERMREQ@WILEY.COM.

This publication is designed to provide accurate and authoritative information in regard to

the subject matter covered. It is sold with the understanding that the publisher is not

engaged in professional services. If professional advice or other expert assistance is

required, the services of a competent professional person should be sought.

Library of Congress Cataloging-in-Publication Data:

Kimball, Ralph.

The data warehouse toolkit : the complete guide to dimensional modeling /

Ralph Kimball, Margy Ross. — 2nd ed.

p. cm.

“Wiley Computer Publishing.”

Includes index.

ISBN 0-471-20024-7

1. Database design. 2. Data warehousing. I. Ross, Margy, 1959– II. Title.

QA76.9.D26 K575 2002

658.4'038'0285574—dc21 2002002284

Printed in the United States of America.

10 9 8 7 6 5 4 3 2 1

CONTENTS

v

Acknowledgments xv

Introduction xvii

Chapter 1 Dimensional Modeling Primer 1

Different Information Worlds 2

Goals of a Data Warehouse 2

The Publishing Metaphor 4

Components of a Data Warehouse 6

Operational Source Systems 7

Data Staging Area 8

Data Presentation 10

Data Access Tools 13

Additional Considerations 14

Dimensional Modeling Vocabulary 16

Fact Table 16

Dimension Tables 19

Bringing Together Facts and Dimensions 21

Dimensional Modeling Myths 24

Common Pitfalls to Avoid 26

Summary 27

Chapter 2 Retail Sales 29

Four-Step Dimensional Design Process 30

Retail Case Study 32

Step 1. Select the Business Process 33

Step 2. Declare the Grain 34

Step 3. Choose the Dimensions 35

Step 4. Identify the Facts 36

Dimension Table Attributes 38

Date Dimension 38

Product Dimension 42

Store Dimension 45

Promotion Dimension 46

Degenerate Transaction Number Dimension 50

Retail Schema in Action 51

Retail Schema Extensibility 52

Resisting Comfort Zone Urges 54

Dimension Normalization (Snowflaking) 55

Too Many Dimensions 57

Surrogate Keys 58

Market Basket Analysis 62

Summary 65

Chapter 3 Inventory 67

Introduction to the Value Chain 68

Inventory Models 69

Inventory Periodic Snapshot 69

Inventory Transactions 74

Inventory Accumulating Snapshot 75

Value Chain Integration 76

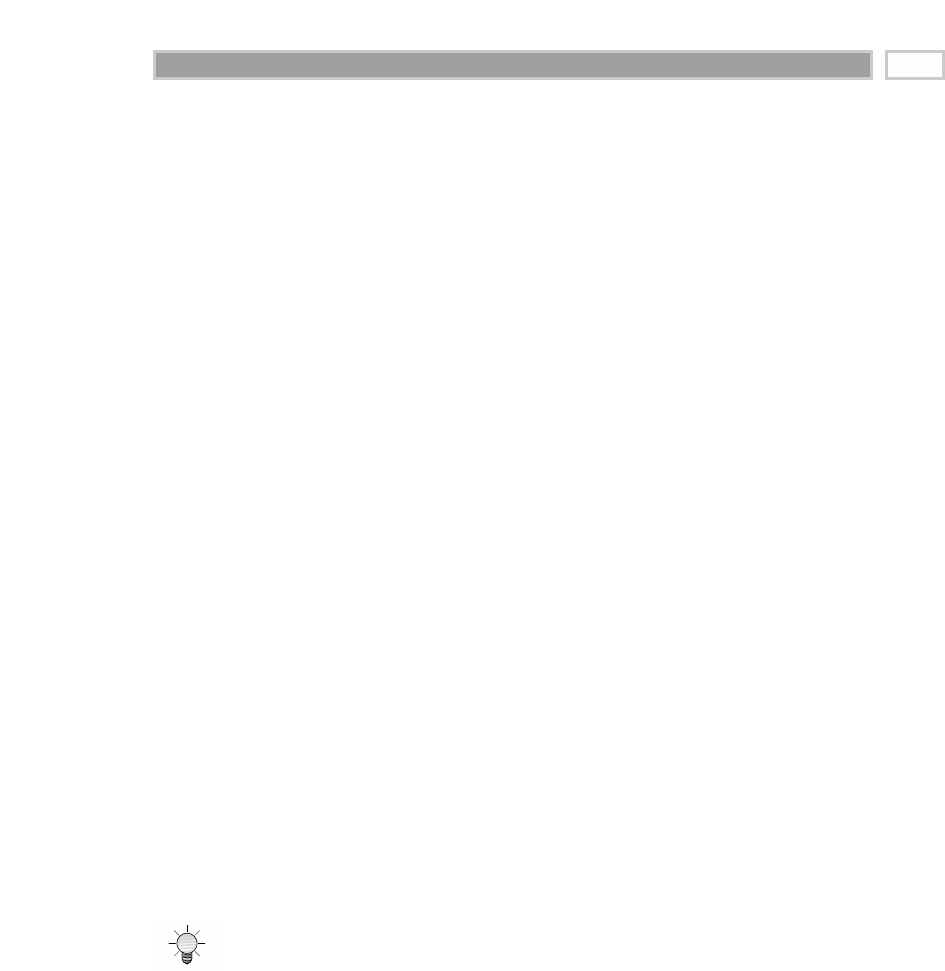

Data Warehouse Bus Architecture 78

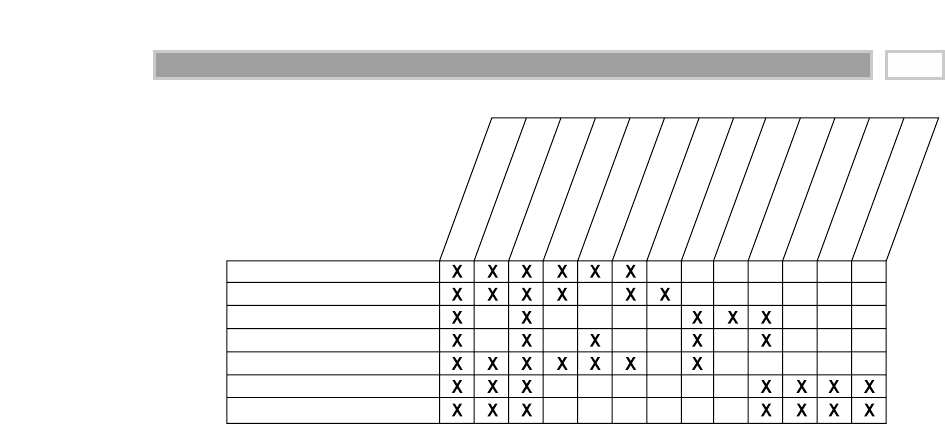

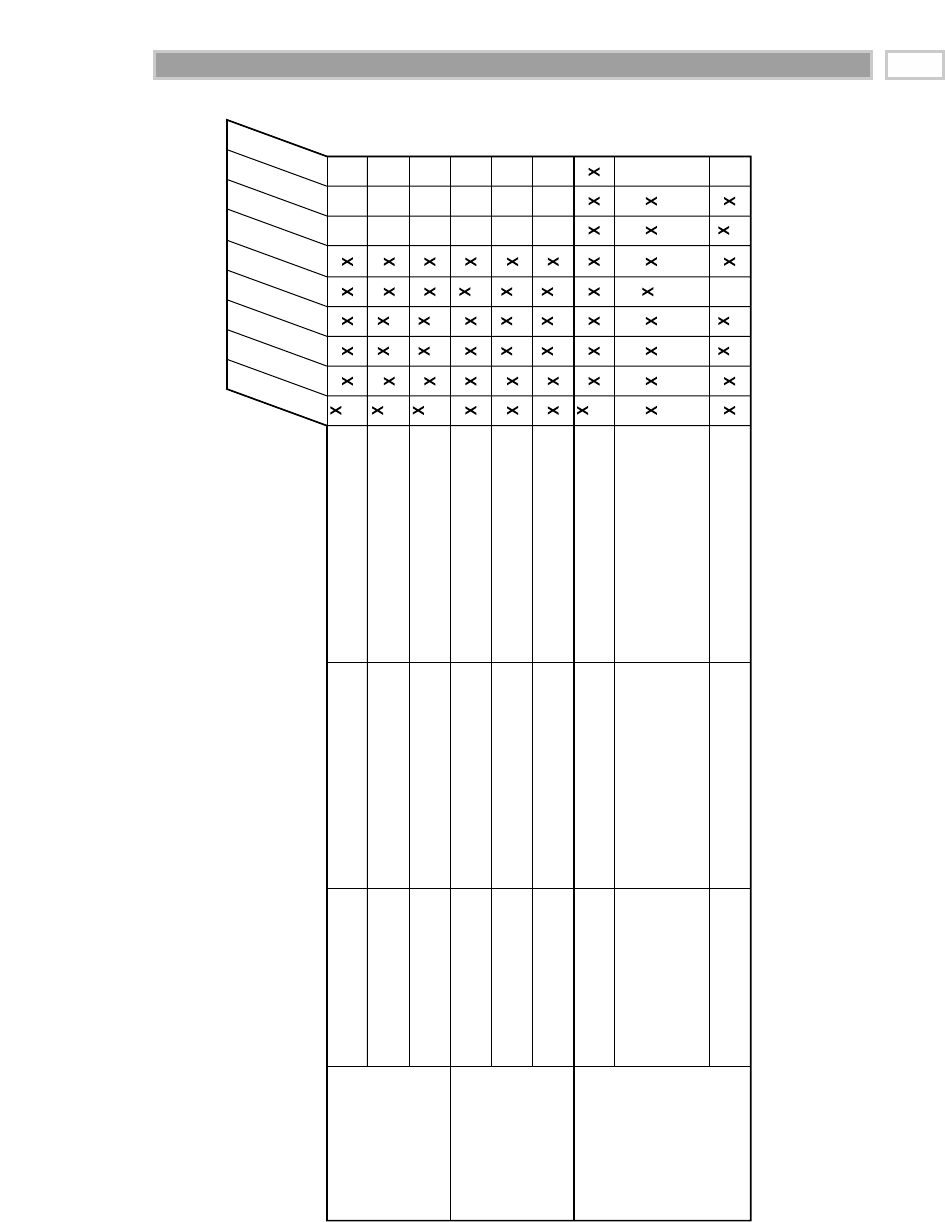

Data Warehouse Bus Matrix 79

Conformed Dimensions 82

Conformed Facts 87

Summary 88

Chapter 4 Procurement 89

Procurement Case Study 89

Procurement Transactions 90

Multiple- versus Single-Transaction Fact Tables 91

Complementary Procurement Snapshot 93

Contents

vi

Slowly Changing Dimensions 95

Type 1: Overwrite the Value 95

Type 2: Add a Dimension Row 97

Type 3: Add a Dimension Column 100

Hybrid Slowly Changing Dimension Techniques 102

Predictable Changes with Multiple Version Overlays 102

Unpredictable Changes with Single Version Overlay 103

More Rapidly Changing Dimensions 105

Summary 105

Chapter 5 Order Management 107

Introduction to Order Management 108

Order Transactions 109

Fact Normalization 109

Dimension Role-Playing 110

Product Dimension Revisited 111

Customer Ship-To Dimension 113

Deal Dimension 116

Degenerate Dimension for Order Number 117

Junk Dimensions 117

Multiple Currencies 119

Header and Line Item Facts with Different Granularity 121

Invoice Transactions 122

Profit and Loss Facts 124

Profitability—The Most Powerful Data Mart 126

Profitability Words of Warning 127

Customer Satisfaction Facts 127

Accumulating Snapshot for the Order Fulfillment Pipeline 128

Lag Calculations 130

Multiple Units of Measure 130

Beyond the Rear-View Mirror 132

Fact Table Comparison 132

Transaction Fact Tables 133

Periodic Snapshot Fact Tables 134

Accumulating Snapshot Fact Tables 134

Contents vii

Designing Real-Time Partitions 135

Requirements for the Real-Time Partition 136

Transaction Grain Real-Time Partition 136

Periodic Snapshot Real-Time Partition 137

Accumulating Snapshot Real-Time Partition 138

Summary 139

Chapter 6 Customer Relationship Management 141

CRM Overview 142

Operational and Analytical CRM 143

Packaged CRM 145

Customer Dimension 146

Name and Address Parsing 147

Other Common Customer Attributes 150

Dimension Outriggers for a Low-Cardinality Attribute Set 153

Large Changing Customer Dimensions 154

Implications of Type 2 Customer Dimension Changes 159

Customer Behavior Study Groups 160

Commercial Customer Hierarchies 161

Combining Multiple Sources of Customer Data 168

Analyzing Customer Data from Multiple Business Processes 169

Summary 170

Chapter 7 Accounting 173

Accounting Case Study 174

General Ledger Data 175

General Ledger Periodic Snapshot 175

General Ledger Journal Transactions 177

Financial Statements 180

Budgeting Process 180

Consolidated Fact Tables 184

Role of OLAP and Packaged Analytic Solutions 185

Summary 186

Contents

viii

Chapter 8 Human Resources Management 187

Time-Stamped Transaction Tracking in a Dimension 188

Time-Stamped Dimension with Periodic Snapshot Facts 191

Audit Dimension 193

Keyword Outrigger Dimension 194

AND/OR Dilemma 195

Searching for Substrings 196

Survey Questionnaire Data 197

Summary 198

Chapter 9 Financial Services 199

Banking Case Study 200

Dimension Triage 200

Household Dimension 204

Multivalued Dimensions 205

Minidimensions Revisited 206

Arbitrary Value Banding of Facts 207

Point-in-Time Balances 208

Heterogeneous Product Schemas 210

Heterogeneous Products with Transaction Facts 215

Summary 215

Chapter 10 Telecommunications and Utilities 217

Telecommunications Case Study 218

General Design Review Considerations 220

Granularity 220

Date Dimension 222

Degenerate Dimensions 222

Dimension Decodes and Descriptions 222

Surrogate Keys 223

Too Many (or Too Few) Dimensions 223

Draft Design Exercise Discussion 223

Geographic Location Dimension 226

Location Outrigger 226

Leveraging Geographic Information Systems 227

Summary 227

Contents ix

Chapter 11 Transportation 229

Airline Frequent Flyer Case Study 230

Multiple Fact Table Granularities 230

Linking Segments into Trips 233

Extensions to Other Industries 234

Cargo Shipper 234

Travel Services 235

Combining Small Dimensions into a Superdimension 236

Class of Service 236

Origin and Destination 237

More Date and Time Considerations 239

Country-Specific Calendars 239

Time of Day as a Dimension or Fact 240

Date and Time in Multiple Time Zones 240

Summary 241

Chapter 12 Education 243

University Case Study 244

Accumulating Snapshot for Admissions Tracking 244

Factless Fact Tables 246

Student Registration Events 247

Facilities Utilization Coverage 249

Student Attendance Events 250

Other Areas of Analytic Interest 253

Summary 254

Chapter 13 Health Care 255

Health Care Value Circle 256

Health Care Bill 258

Roles Played By the Date Dimension 261

Multivalued Diagnosis Dimension 262

Extending a Billing Fact Table to Show Profitability 265

Dimensions for Billed Hospital Stays 266

Contents

x

TEAMFLY

Team-Fly®

Complex Health Care Events 267

Medical Records 269

Fact Dimension for Sparse Facts 269

Going Back in Time 271

Late-Arriving Fact Rows 271

Late-Arriving Dimension Rows 273

Summary 274

Chapter 14 Electronic Commerce 277

Web Client-Server Interactions Tutorial 278

Why the Clickstream Is Not Just Another Data Source 281

Challenges of Tracking with Clickstream Data 282

Specific Dimensions for the Clickstream 287

Clickstream Fact Table for Complete Sessions 292

Clickstream Fact Table for Individual Page Events 295

Aggregate Clickstream Fact Tables 298

Integrating the Clickstream Data Mart into the

Enterprise Data Warehouse 299

Electronic Commerce Profitability Data Mart 300

Summary 303

Chapter 15 Insurance 305

Insurance Case Study 306

Insurance Value Chain 307

Draft Insurance Bus Matrix 309

Policy Transactions 309

Dimension Details and Techniques 310

Alternative (or Complementary) Policy

Accumulating Snapshot 315

Policy Periodic Snapshot 316

Conformed Dimensions 316

Conformed Facts 316

Heterogeneous Products Again 318

Multivalued Dimensions Again 318

Contents xi

More Insurance Case Study Background 319

Updated Insurance Bus Matrix 320

Claims Transactions 322

Claims Accumulating Snapshot 323

Policy/Claims Consolidated Snapshot 324

Factless Accident Events 325

Common Dimensional Modeling Mistakes to Avoid 326

Summary 330

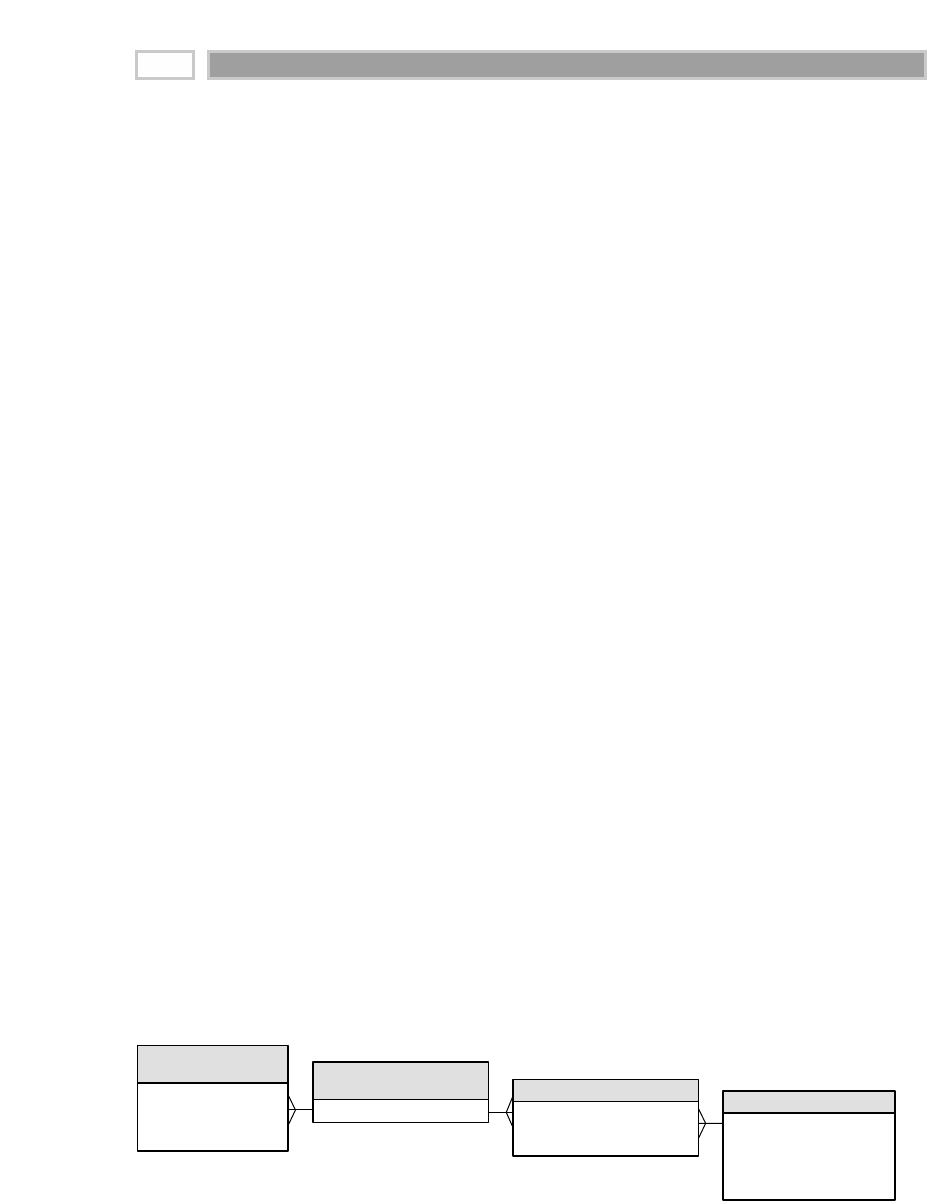

Chapter 16 Building the Data Warehouse 331

Business Dimensional Lifecycle Road Map 332

Road Map Major Points of Interest 333

Project Planning and Management 334

Assessing Readiness 334

Scoping 336

Justification 336

Staffing 337

Developing and Maintaining the Project Plan 339

Business Requirements Definition 340

Requirements Preplanning 341

Collecting the Business Requirements 343

Postcollection Documentation and Follow-up 345

Lifecycle Technology Track 347

Technical Architecture Design 348

Eight-Step Process for Creating the Technical Architecture 348

Product Selection and Installation 351

Lifecycle Data Track 353

Dimensional Modeling 353

Physical Design 355

Aggregation Strategy 356

Initial Indexing Strategy 357

Data Staging Design and Development 358

Dimension Table Staging 358

Fact Table Staging 361

Contents

xii

Lifecycle Analytic Applications Track 362

Analytic Application Specification 363

Analytic Application Development 363

Deployment 364

Maintenance and Growth 365

Common Data Warehousing Mistakes to Avoid 366

Summary 369

Chapter 17 Present Imperatives and Future Outlook 371

Ongoing Technology Advances 372

Political Forces Demanding Security and Affecting Privacy 375

Conflict between Beneficial Uses and Insidious Abuses 375

Who Owns Your Personal Data? 376

What Is Likely to Happen? Watching the Watchers . . . 377

How Watching the Watchers Affects Data

Warehouse Architecture 378

Designing to Avoid Catastrophic Failure 379

Catastrophic Failures 380

Countering Catastrophic Failures 380

Intellectual Property and Fair Use 383

Cultural Trends in Data Warehousing 383

Managing by the Numbers

across the Enterprise 383

Increased Reliance on Sophisticated Key

Performance Indicators 384

Behavior Is the New Marquee Application 385

Packaged Applications Have Hit Their High Point 385

Application Integration Has to Be Done by Someone 386

Data Warehouse Outsourcing Needs a Sober Risk Assessment 386

In Closing 387

Glossary 389

Index 419

Contents xiii

ACKNOWLEDGMENTS

xv

First of all, we want to thank the thousands of you who have read our Toolkit

books, attended our courses, and engaged us in consulting projects. We have

learned as much from you as we have taught. As a group, you have had a pro-

foundly positive impact on the data warehousing industry. Congratulations!

This book would not have been written without the assistance of our business

partners. We want to thank Julie Kimball of Ralph Kimball Associates for her

vision and determination in getting the project launched. While Julie was the

catalyst who got the ball rolling, Bob Becker of DecisionWorks Consulting

helped keep it in motion as he drafted, reviewed, and served as a general

sounding board. We are grateful to them both because they helped an enor-

mous amount.

We wrote this book with a little help from our friends, who provided input or

feedback on specific chapters. We want to thank Bill Schmarzo of Decision-

Works, Charles Hagensen of Attachmate Corporation, and Warren Thorn-

thwaite of InfoDynamics for their counsel on Chapters 6, 7, and 16, respectively.

Bob Elliott, our editor at John Wiley & Sons, and the entire Wiley team have

supported this project with skill, encouragement, and enthusiasm. It has been

a pleasure to work with them. We also want to thank Justin Kestelyn, editor-

in-chief at Intelligent Enterprise for allowing us to adapt materials from sev-

eral of Ralph’s articles for inclusion in this book.

To our families, thanks for being there for us when we needed you and for giv-

ing us the time it took. Spouses Julie Kimball and Scott Ross and children Sara

Hayden Smith, Brian Kimball, and Katie Ross all contributed a lot to this book,

often without realizing it. Thanks for your unconditional support.

xvii

INTRODUCTION

The data warehousing industry certainly has matured since Ralph Kimball pub-

lished the first edition of The Data Warehouse Toolkit (Wiley) in 1996. Although

large corporate early adopters paved the way, since then, data warehousing

has been embraced by organizations of all sizes. The industry has constructed

thousands of data warehouses. The volume of data continues to grow as we

populate our warehouses with increasingly atomic data and update them with

greater frequency. Vendors continue to blanket the market with an ever-

expanding set of tools to help us with data warehouse design, development,

and usage. Most important, armed with access to our data warehouses, busi-

ness professionals are making better decisions and generating payback on

their data warehouse investments.

Since the first edition of The Data Warehouse Toolkit was published, dimen-

sional modeling has been broadly accepted as the dominant technique for data

warehouse presentation. Data warehouse practitioners and pundits alike have

recognized that the data warehouse presentation must be grounded in sim-

plicity if it stands any chance of success. Simplicity is the fundamental key that

allows users to understand databases easily and software to navigate data-

bases efficiently. In many ways, dimensional modeling amounts to holding the

fort against assaults on simplicity. By consistently returning to a business-

driven perspective and by refusing to compromise on the goals of user under-

standability and query performance, we establish a coherent design that

serves the organization’s analytic needs. Based on our experience and the

overwhelming feedback from numerous practitioners from companies like

your own, we believe that dimensional modeling is absolutely critical to a suc-

cessful data warehousing initiative.

Dimensional modeling also has emerged as the only coherent architecture for

building distributed data warehouse systems. When we use the conformed

dimensions and conformed facts of a set of dimensional models, we have a

practical and predictable framework for incrementally building complex data

warehouse systems that have no center.

For all that has changed in our industry, the core dimensional modeling tech-

niques that Ralph Kimball published six years ago have withstood the test of

time. Concepts such as slowly changing dimensions, heterogeneous products,

factless fact tables, and architected data marts continue to be discussed in data

warehouse design workshops around the globe. The original concepts have

been embellished and enhanced by new and complementary techniques. We

decided to publish a second edition of Kimball’s seminal work because we felt

that it would be useful to pull together our collective thoughts on dimensional

modeling under a single cover. We have each focused exclusively on decision

support and data warehousing for over two decades. We hope to share the

dimensional modeling patterns that have emerged repeatedly during the

course of our data warehousing careers. This book is loaded with specific,

practical design recommendations based on real-world scenarios.

The goal of this book is to provide a one-stop shop for dimensional modeling

techniques. True to its title, it is a toolkit of dimensional design principles and

techniques. We will address the needs of those just getting started in dimen-

sional data warehousing, and we will describe advanced concepts for those of

you who have been at this a while. We believe that this book stands alone in its

depth of coverage on the topic of dimensional modeling.

Intended Audience

This book is intended for data warehouse designers, implementers, and man-

agers. In addition, business analysts who are active participants in a ware-

house initiative will find the content useful.

Even if you’re not directly responsible for the dimensional model, we believe

that it is important for all members of a warehouse project team to be comfort-

able with dimensional modeling concepts. The dimensional model has an

impact on most aspects of a warehouse implementation, beginning with the

translation of business requirements, through data staging, and finally, to the

unveiling of a data warehouse through analytic applications. Due to the broad

implications, you need to be conversant in dimensional modeling regardless

whether you are responsible primarily for project management, business

analysis, data architecture, database design, data staging, analytic applica-

tions, or education and support. We’ve written this book so that it is accessible

to a broad audience.

For those of you who have read the first edition of this book, some of the famil-

iar case studies will reappear in this edition; however, they have been updated

significantly and fleshed out with richer content. We have developed vignettes

for new industries, including health care, telecommunications, and electronic

commerce. In addition, we have introduced more horizontal, cross-industry

case studies for business functions such as human resources, accounting, pro-

curement, and customer relationship management.

Introduction

xviii

Introduction xix

The content in this book is mildly technical. We discuss dimensional modeling

in the context of a relational database primarily. We presume that readers have

basic knowledge of relational database concepts such as tables, rows, keys,

and joins. Given that we will be discussing dimensional models in a non-

denominational manner, we won’t dive into specific physical design and

tuning guidance for any given database management systems.

Chapter Preview

The book is organized around a series of business vignettes or case studies. We

believe that developing the design techniques by example is an extremely

effective approach because it allows us to share very tangible guidance. While

not intended to be full-scale application or industry solutions, these examples

serve as a framework to discuss the patterns that emerge in dimensional mod-

eling. In our experience, it is often easier to grasp the main elements of a

design technique by stepping away from the all-too-familiar complexities of

one’s own applications in order to think about another business. Readers of

the first edition have responded very favorably to this approach.

The chapters of this book build on one another. We will start with basic con-

cepts and introduce more advanced content as the book unfolds. The chapters

are to be read in order by every reader. For example, Chapter 15 on insurance

will be difficult to comprehend unless you have read the preceding chapters

on retailing, procurement, order management, and customer relationship

management.

Those of you who have read the first edition may be tempted to skip the first

few chapters. While some of the early grounding regarding facts and dimen-

sions may be familiar turf, we don’t want you to sprint too far ahead. For

example, the first case study focuses on the retailing industry, just as it did in

the first edition. However, in this edition we advocate a new approach, mak-

ing a strong case for tackling the atomic, bedrock data of your organization.

You’ll miss out on this rationalization and other updates to fundamental con-

cepts if you skip ahead too quickly.

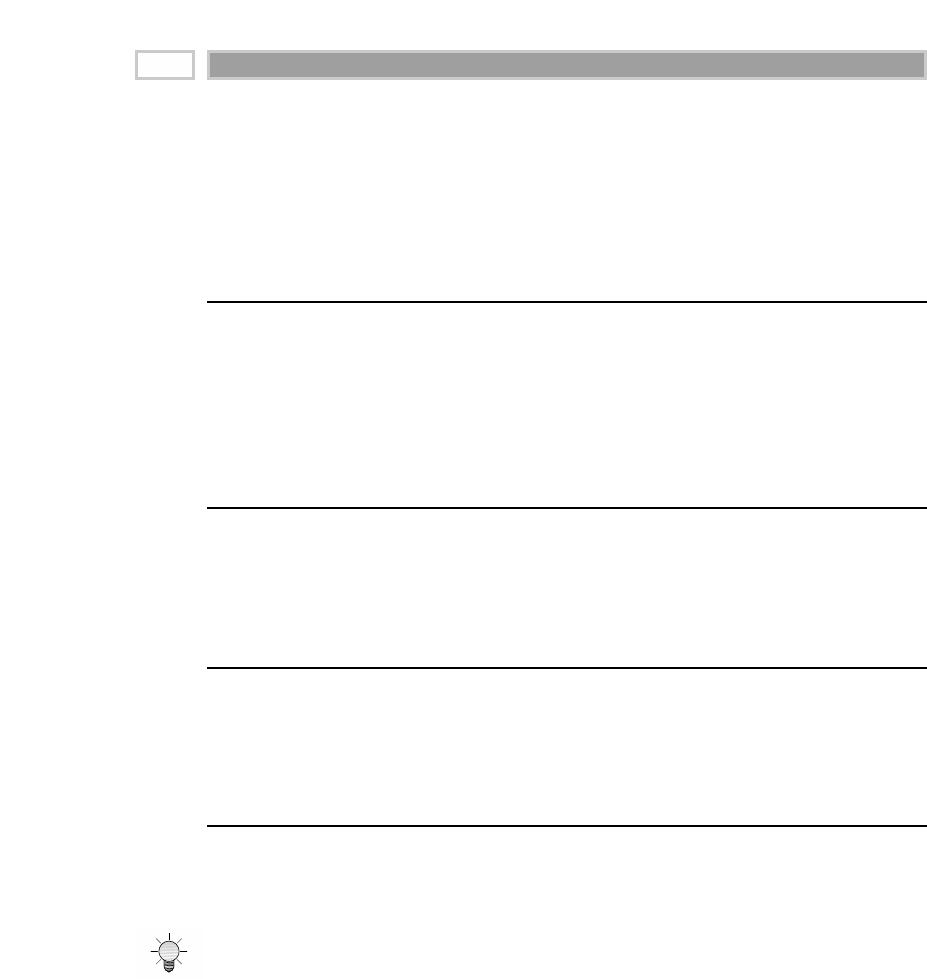

Navigation Aids

We have laced the book with tips, key concepts, and chapter pointers to make

it more usable and easily referenced in the future. In addition, we have pro-

vided an extensive glossary of terms.

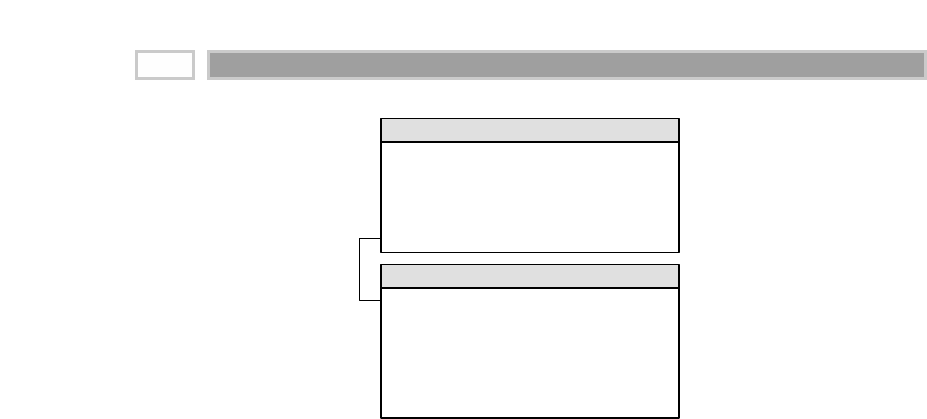

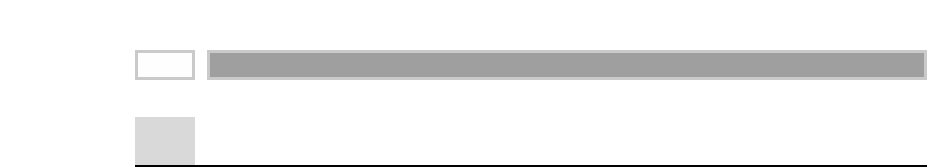

You can find the tips sprinkled throughout this book by flipping through the chapters

and looking for the lightbulb icon.

We begin each chapter with a sidebar of key concepts, denoted by the key icon.

Purpose of Each Chapter

Before we get started, we want to give you a chapter-by-chapter preview of the

concepts covered as the book unfolds.

Chapter 1: Dimensional Modeling Primer

The book begins with a primer on dimensional modeling. We explore the com-

ponents of the overall data warehouse architecture and establish core vocabu-

lary that will be used during the remainder of the book. We dispel some of the

myths and misconceptions about dimensional modeling, and we discuss the

role of normalized models.

Chapter 2: Retail Sales

Retailing is the classic example used to illustrate dimensional modeling. We

start with the classic because it is one that we all understand. Hopefully, you

won’t need to think very hard about the industry because we want you to

focus on core dimensional modeling concepts instead. We begin by discussing

the four-step process for designing dimensional models. We explore dimen-

sion tables in depth, including the date dimension that will be reused repeat-

edly throughout the book. We also discuss degenerate dimensions,

snowflaking, and surrogate keys. Even if you’re not a retailer, this chapter is

required reading because it is chock full of fundamentals.

Chapter 3: Inventory

We remain within the retail industry for our second case study but turn our

attention to another business process. This case study will provide a very vivid

example of the data warehouse bus architecture and the use of conformed

dimensions and facts. These concepts are critical to anyone looking to con-

struct a data warehouse architecture that is integrated and extensible.

Introduction

xx

TEAMFLY

Team-Fly®

Chapter 4: Procurement

This chapter reinforces the importance of looking at your organization’s value

chain as you plot your data warehouse. We also explore a series of basic and

advanced techniques for handling slowly changing dimension attributes.

Chapter 5: Order Management

In this case study we take a look at the business processes that are often the

first to be implemented in data warehouses as they supply core business per-

formance metrics—what are we selling to which customers at what price? We

discuss the situation in which a dimension plays multiple roles within a

schema. We also explore some of the common challenges modelers face when

dealing with order management information, such as header/line item con-

siderations, multiple currencies or units of measure, and junk dimensions with

miscellaneous transaction indicators. We compare the three fundamental

types of fact tables: transaction, periodic snapshot, and accumulating snap-

shot. Finally, we provide recommendations for handling more real-time ware-

housing requirements.

Chapter 6: Customer Relationship Management

Numerous data warehouses have been built on the premise that we need to bet-

ter understand and service our customers. This chapter covers key considera-

tions surrounding the customer dimension, including address standardization,

managing large volume dimensions, and modeling unpredictable customer

hierarchies. It also discusses the consolidation of customer data from multiple

sources.

Chapter 7: Accounting

In this totally new chapter we discuss the modeling of general ledger informa-

tion for the data warehouse. We describe the appropriate handling of year-to-

date facts and multiple fiscal calendars, as well as the notion of consolidated

dimensional models that combine data from multiple business processes.

Chapter 8: Human Resources Management

This new chapter explores several unique aspects of human resources dimen-

sional models, including the situation in which a dimension table begins to

behave like a fact table. We also introduce audit and keyword dimensions, as

well as the handling of survey questionnaire data.

Introduction xxi

Introduction

xxii

Chapter 9: Financial Services

The banking case study explores the concept of heterogeneous products in

which each line of business has unique descriptive attributes and performance

metrics. Obviously, the need to handle heterogeneous products is not unique

to financial services. We also discuss the complicated relationships among

accounts, customers, and households.

Chapter 10: Telecommunications and Utilities

This new chapter is structured somewhat differently to highlight considera-

tions when performing a data model design review. In addition, we explore

the idiosyncrasies of geographic location dimensions, as well as opportunities

for leveraging geographic information systems.

Chapter 11: Transportation

In this case study we take a look at related fact tables at different levels of gran-

ularity. We discuss another approach for handling small dimensions, and we

take a closer look at date and time dimensions, covering such concepts as

country-specific calendars and synchronization across multiple time zones.

Chapter 12: Education

We look at several factless fact tables in this chapter and discuss their impor-

tance in analyzing what didn’t happen. In addition, we explore the student

application pipeline, which is a prime example of an accumulating snapshot

fact table.

Chapter 13: Health Care

Some of the most complex models that we have ever worked with are from the

health care industry. This new chapter illustrates the handling of such com-

plexities, including the use of a bridge table to model multiple diagnoses and

providers associated with a patient treatment.

Chapter 14: Electronic Commerce

This chapter provides an introduction to modeling clickstream data. The con-

cepts are derived from The Data Webhouse Toolkit (Wiley 2000), which Ralph

Kimball coauthored with Richard Merz.

Introduction xxiii

Chapter 15: Insurance

The final case study serves to illustrate many of the techniques we discussed

earlier in the book in a single set of interrelated schemas. It can be viewed

as a pulling-it-all-together chapter because the modeling techniques will be

layered on top of one another, similar to overlaying overhead projector

transparencies.

Chapter 16: Building the Data Warehouse

Now that you are comfortable designing dimensional models, we provide a

high-level overview of the activities that are encountered during the lifecycle

of a typical data warehouse project iteration. This chapter could be considered

a lightning tour of The Data Warehouse Lifecycle Toolkit (Wiley 1998) that we

coauthored with Laura Reeves and Warren Thornthwaite.

Chapter 17: Present Imperatives and Future Outlook

In this final chapter we peer into our crystal ball to provide a preview of what

we anticipate data warehousing will look like in the future.

Glossary

We’ve supplied a detailed glossary to serve as a reference resource. It will help

bridge the gap between your general business understanding and the case

studies derived from businesses other than your own.

Companion Web Site

You can access the book’s companion Web site at www.kimballuniversity.com.

The Web site offers the following resources:

■■ Register for Design Tips to receive ongoing, practical guidance about

dimensional modeling and data warehouse design via electronic mail on a

periodic basis.

■■ Link to all Ralph Kimball’s articles from Intelligent Enterprise and its

predecessor, DBMS Magazine.

■■ Learn about Kimball University classes for quality, vendor-independent

education consistent with the authors’ experiences and writings.

Summary

The goal of this book is to communicate a set of standard techniques for

dimensional data warehouse design. Crudely speaking, if you as the reader

get nothing else from this book other than the conviction that your data ware-

house must be driven from the needs of business users and therefore built and

presented from a simple dimensional perspective, then this book will have

served its purpose. We are confident that you will be one giant step closer to

data warehousing success if you buy into these premises.

Now that you know where we are headed, it is time to dive into the details.

We’ll begin with a primer on dimensional modeling in Chapter 1 to ensure that

everyone is on the same page regarding key terminology and architectural

concepts. From there we will begin our discussion of the fundamental tech-

niques of dimensional modeling, starting with the tried-and-true retail industry.

Introduction

xxiv

Dimensional Modeling

Primer

CHAPTER 1

1

In this first chapter we lay the groundwork for the case studies that follow.

We’ll begin by stepping back to consider data warehousing from a macro per-

spective. Some readers may be disappointed to learn that it is not all about

tools and techniques—first and foremost, the data warehouse must consider

the needs of the business. We’ll drive stakes in the ground regarding the goals

of the data warehouse while observing the uncanny similarities between the

responsibilities of a data warehouse manager and those of a publisher. With

this big-picture perspective, we’ll explore the major components of the ware-

house environment, including the role of normalized models. Finally, we’ll

close by establishing fundamental vocabulary for dimensional modeling. By

the end of this chapter we hope that you’ll have an appreciation for the need

to be half DBA (database administrator) and half MBA (business analyst) as

you tackle your data warehouse.

Chapter 1 discusses the following concepts:

■■ Business-driven goals of a data warehouse

■■ Data warehouse publishing

■■ Major components of the overall data warehouse

■■ Importance of dimensional modeling for the data

warehouse presentation area

■■ Fact and dimension table terminology

■■ Myths surrounding dimensional modeling

■■ Common data warehousing pitfalls to avoid

Different Information Worlds

One of the most important assets of any organization is its information. This

asset is almost always kept by an organization in two forms: the operational

systems of record and the data warehouse. Crudely speaking, the operational

systems are where the data is put in, and the data warehouse is where we get

the data out.

The users of an operational system turn the wheels of the organization. They

take orders, sign up new customers, and log complaints. Users of an opera-

tional system almost always deal with one record at a time. They repeatedly

perform the same operational tasks over and over.

The users of a data warehouse, on the other hand, watch the wheels of the orga-

nization turn. They count the new orders and compare them with last week’s

orders and ask why the new customers signed up and what the customers

complained about. Users of a data warehouse almost never deal with one row

at a time. Rather, their questions often require that hundreds or thousands of

rows be searched and compressed into an answer set. To further complicate

matters, users of a data warehouse continuously change the kinds of questions

they ask.

In the first edition of The Data Warehouse Toolkit (Wiley 1996), Ralph Kimball

devoted an entire chapter to describe the dichotomy between the worlds of

operational processing and data warehousing. At this time, it is widely recog-

nized that the data warehouse has profoundly different needs, clients, struc-

tures, and rhythms than the operational systems of record. Unfortunately, we

continue to encounter supposed data warehouses that are mere copies of the

operational system of record stored on a separate hardware platform. While

this may address the need to isolate the operational and warehouse environ-

ments for performance reasons, it does nothing to address the other inherent

differences between these two types of systems. Business users are under-

whelmed by the usability and performance provided by these pseudo data

warehouses. These imposters do a disservice to data warehousing because

they don’t acknowledge that warehouse users have drastically different needs

than operational system users.

Goals of a Data Warehouse

Before we delve into the details of modeling and implementation, it is helpful

to focus on the fundamental goals of the data warehouse. The goals can be

developed by walking through the halls of any organization and listening to

business management. Inevitably, these recurring themes emerge:

CHAPTER 1

2

■■ “We have mountains of data in this company, but we can’t access it.”

■■ “We need to slice and dice the data every which way.”

■■ “You’ve got to make it easy for business people to get at the data directly.”

■■ “Just show me what is important.”

■■ “It drives me crazy to have two people present the same business metrics

at a meeting, but with different numbers.”

■■ “We want people to use information to support more fact-based decision

making.”

Based on our experience, these concerns are so universal that they drive the

bedrock requirements for the data warehouse. Let’s turn these business man-

agement quotations into data warehouse requirements.

The data warehouse must make an organization’s information easily acces-

sible. The contents of the data warehouse must be understandable. The

data must be intuitive and obvious to the business user, not merely the

developer. Understandability implies legibility; the contents of the data

warehouse need to be labeled meaningfully. Business users want to sepa-

rate and combine the data in the warehouse in endless combinations, a

process commonly referred to as slicing and dicing. The tools that access the

data warehouse must be simple and easy to use. They also must return

query results to the user with minimal wait times.

The data warehouse must present the organization’s information consis-

tently. The data in the warehouse must be credible. Data must be carefully

assembled from a variety of sources around the organization, cleansed,

quality assured, and released only when it is fit for user consumption.

Information from one business process should match with information

from another. If two performance measures have the same name, then they

must mean the same thing. Conversely, if two measures don’t mean the

same thing, then they should be labeled differently. Consistent information

means high-quality information. It means that all the data is accounted for

and complete. Consistency also implies that common definitions for the

contents of the data warehouse are available for users.

The data warehouse must be adaptive and resilient to change. We simply

can’t avoid change. User needs, business conditions, data, and technology

are all subject to the shifting sands of time. The data warehouse must be

designed to handle this inevitable change. Changes to the data warehouse

should be graceful, meaning that they don’t invalidate existing data or

applications. The existing data and applications should not be changed or

disrupted when the business community asks new questions or new data

is added to the warehouse. If descriptive data in the warehouse is modi-

fied, we must account for the changes appropriately.

Dimensional Modeling Primer 3

The data warehouse must be a secure bastion that protects our information

assets. An organization’s informational crown jewels are stored in the data

warehouse. At a minimum, the warehouse likely contains information

about what we’re selling to whom at what price—potentially harmful

details in the hands of the wrong people. The data warehouse must effec-

tively control access to the organization’s confidential information.

The data warehouse must serve as the foundation for improved decision

making. The data warehouse must have the right data in it to support deci-

sion making. There is only one true output from a data warehouse: the deci-

sions that are made after the data warehouse has presented its evidence.

These decisions deliver the business impact and value attributable to the

warehouse. The original label that predates the data warehouse is still the

best description of what we are designing: a decision support system.

The business community must accept the data warehouse if it is to be

deemed successful. It doesn’t matter that we’ve built an elegant solution

using best-of-breed products and platforms. If the business community has

not embraced the data warehouse and continued to use it actively six

months after training, then we have failed the acceptance test. Unlike an

operational system rewrite, where business users have no choice but to use

the new system, data warehouse usage is sometimes optional. Business

user acceptance has more to do with simplicity than anything else.

As this list illustrates, successful data warehousing demands much more than

being a stellar DBA or technician. With a data warehousing initiative, we have

one foot in our information technology (IT) comfort zone, while our other foot

is on the unfamiliar turf of business users. We must straddle the two, modify-

ing some of our tried-and-true skills to adapt to the unique demands of data

warehousing. Clearly, we need to bring a bevy of skills to the party to behave

like we’re a hybrid DBA/MBA.

The Publishing Metaphor

With the goals of the data warehouse as a backdrop, let’s compare our respon-

sibilities as data warehouse managers with those of a publishing editor-in-

chief. As the editor of a high-quality magazine, you would be given broad

latitude to manage the magazine’s content, style, and delivery. Anyone with

this job title likely would tackle the following activities:

■■ Identify your readers demographically.

■■ Find out what the readers want in this kind of magazine.

■■ Identify the “best” readers who will renew their subscriptions and buy

products from the magazine’s advertisers.

CHAPTER 1

4

■■ Find potential new readers and make them aware of the magazine.

■■ Choose the magazine content most appealing to the target readers.

■■ Make layout and rendering decisions that maximize the readers’ pleasure.

■■ Uphold high quality writing and editing standards, while adopting a

consistent presentation style.

■■ Continuously monitor the accuracy of the articles and advertiser’s claims.

■■ Develop a good network of writers and contributors as you gather new

input to the magazine’s content from a variety of sources.

■■ Attract advertising and run the magazine profitably.

■■ Publish the magazine on a regular basis.

■■ Maintain the readers’ trust.

■■ Keep the business owners happy.

We also can identify items that should be nongoals for the magazine editor-in-

chief. These would include such things as building the magazine around the

technology of a particular printing press, putting management’s energy into

operational efficiencies exclusively, imposing a technical writing style that

readers don’t easily understand, or creating an intricate and crowded layout

that is difficult to peruse and read.

By building the publishing business on a foundation of serving the readers

effectively, your magazine is likely to be successful. Conversely, go through

the list and imagine what happens if you omit any single item; ultimately, your

magazine would have serious problems.

The point of this metaphor, of course, is to draw the parallel between being a

conventional publisher and being a data warehouse manager. We are con-

vinced that the correct job description for a data warehouse manager is pub-

lisher of the right data. Driven by the needs of the business, data warehouse

managers are responsible for publishing data that has been collected from a

variety of sources and edited for quality and consistency. Your main responsi-

bility as a data warehouse manager is to serve your readers, otherwise known

as business users. The publishing metaphor underscores the need to focus out-

ward to your customers rather than merely focusing inward on products and

processes. While you will use technology to deliver your data warehouse, the

technology is at best a means to an end. As such, the technology and tech-

niques you use to build your data warehouses should not appear directly in

your top job responsibilities.

Let’s recast the magazine publisher’s responsibilities as data warehouse man-

ager responsibilities:

Dimensional Modeling Primer 5

■■ Understand your users by business area, job responsibilities, and com-

puter tolerance.

■■ Determine the decisions the business users want to make with the help of

the data warehouse.

■■ Identify the “best” users who make effective, high-impact decisions using

the data warehouse.

■■ Find potential new users and make them aware of the data warehouse.

■■ Choose the most effective, actionable subset of the data to present in the

data warehouse, drawn from the vast universe of possible data in your

organization.

■■ Make the user interfaces and applications simple and template-driven,

explicitly matching to the users’ cognitive processing profiles.

■■ Make sure the data is accurate and can be trusted, labeling it consistently

across the enterprise.

■■ Continuously monitor the accuracy of the data and the content of the

delivered reports.

■■ Search for new data sources, and continuously adapt the data warehouse

to changing data profiles, reporting requirements, and business priorities.

■■ Take a portion of the credit for the business decisions made using the data

warehouse, and use these successes to justify your staffing, software, and

hardware expenditures.

■■ Publish the data on a regular basis.

■■ Maintain the trust of business users.

■■ Keep your business users, executive sponsors, and boss happy.

If you do a good job with all these responsibilities, you will be a great data

warehouse manager! Conversely, go down through the list and imagine what

happens if you omit any single item. Ultimately, your data warehouse would

have serious problems. We urge you to contrast this view of a data warehouse

manager’s job with your own job description. Chances are the preceding list is

much more oriented toward user and business issues and may not even sound

like a job in IT. In our opinion, this is what makes data warehousing interesting.

Components of a Data Warehouse

Now that we understand the goals of a data warehouse, let’s investigate the

components that make up a complete warehousing environment. It is helpful

to understand the pieces carefully before we begin combining them to create a

CHAPTER 1

6

TEAMFLY

Team-Fly®

data warehouse. Each warehouse component serves a specific function. We

need to learn the strategic significance of each component and how to wield it

effectively to win the data warehousing game. One of the biggest threats to

data warehousing success is confusing the components’ roles and functions.

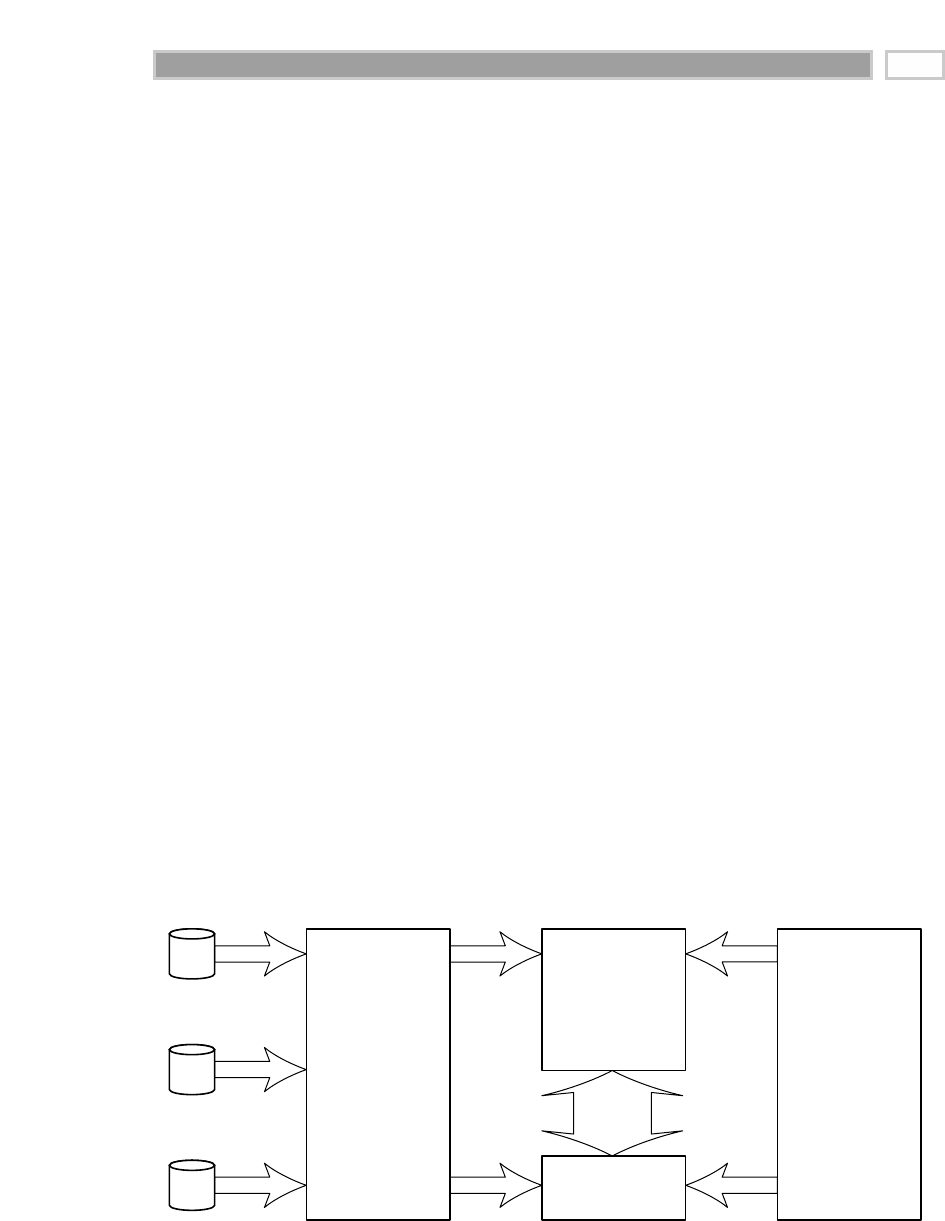

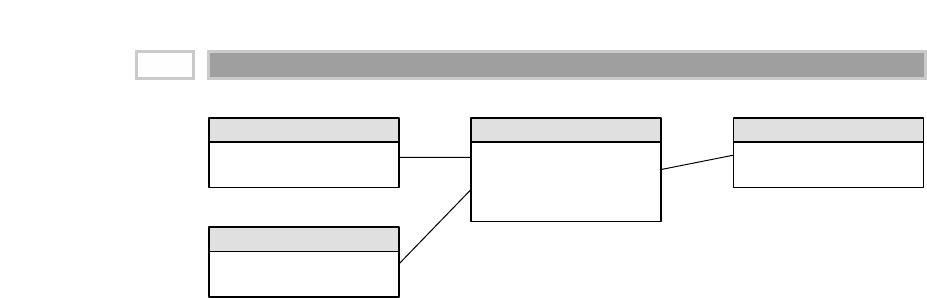

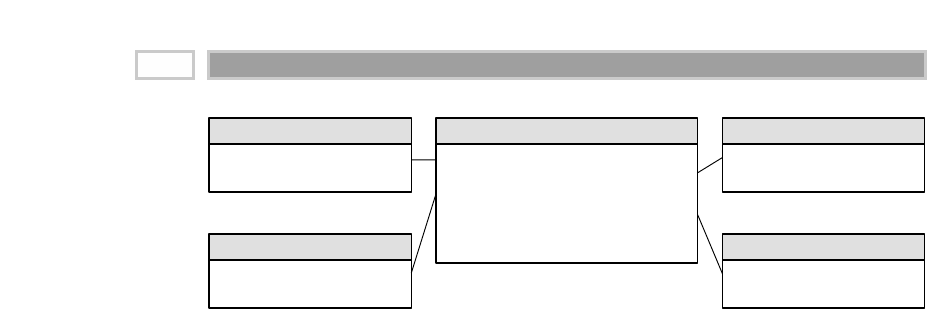

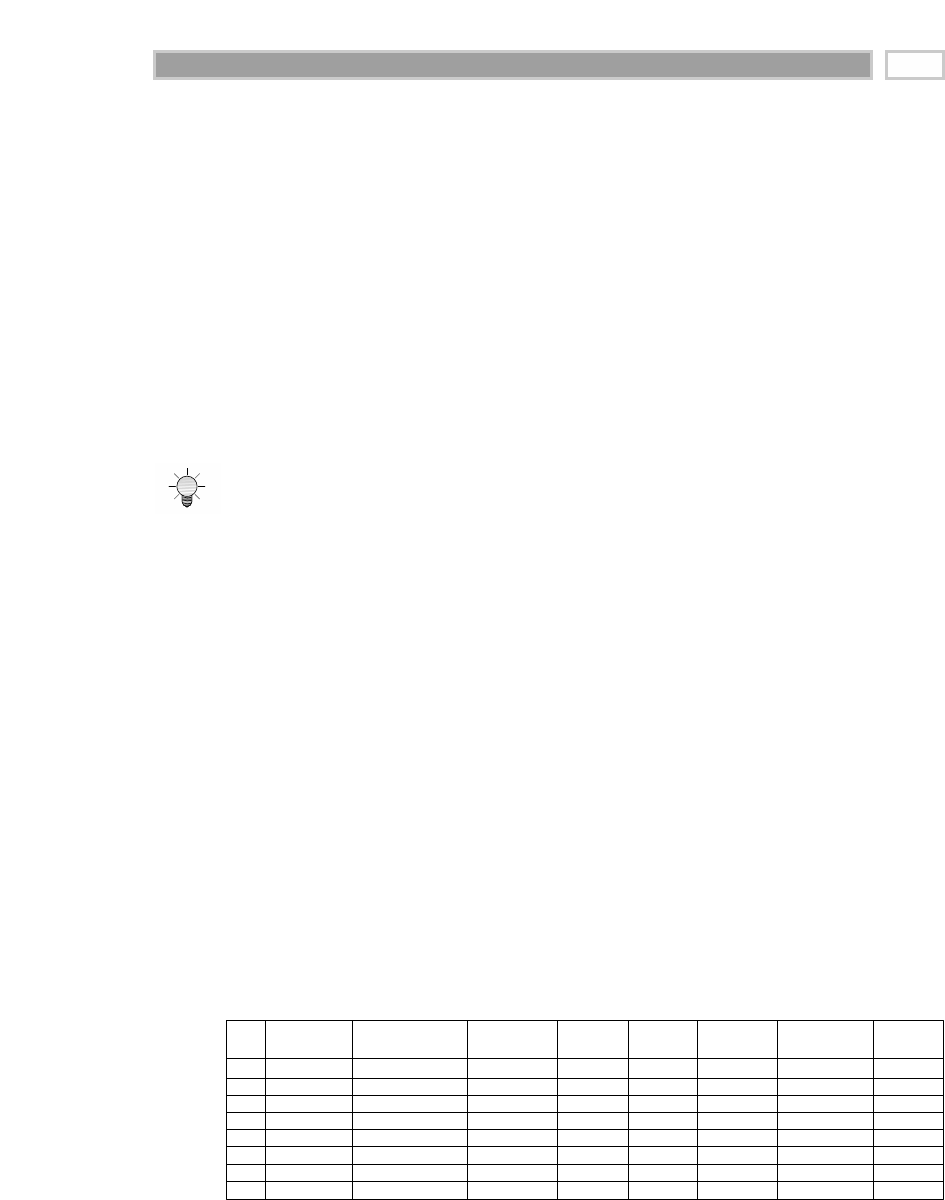

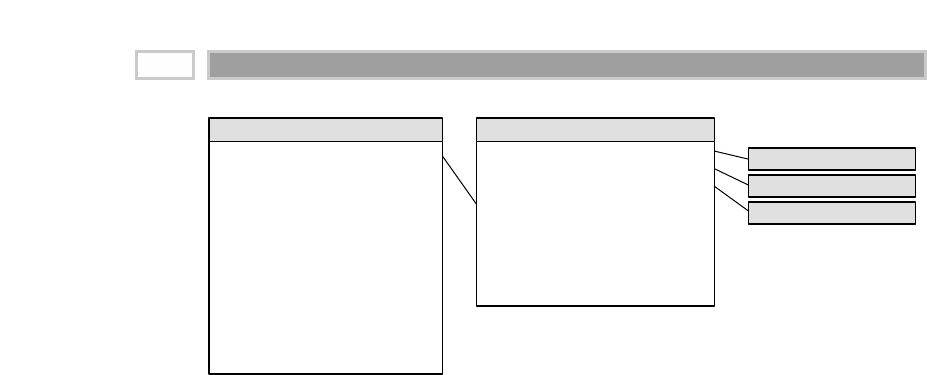

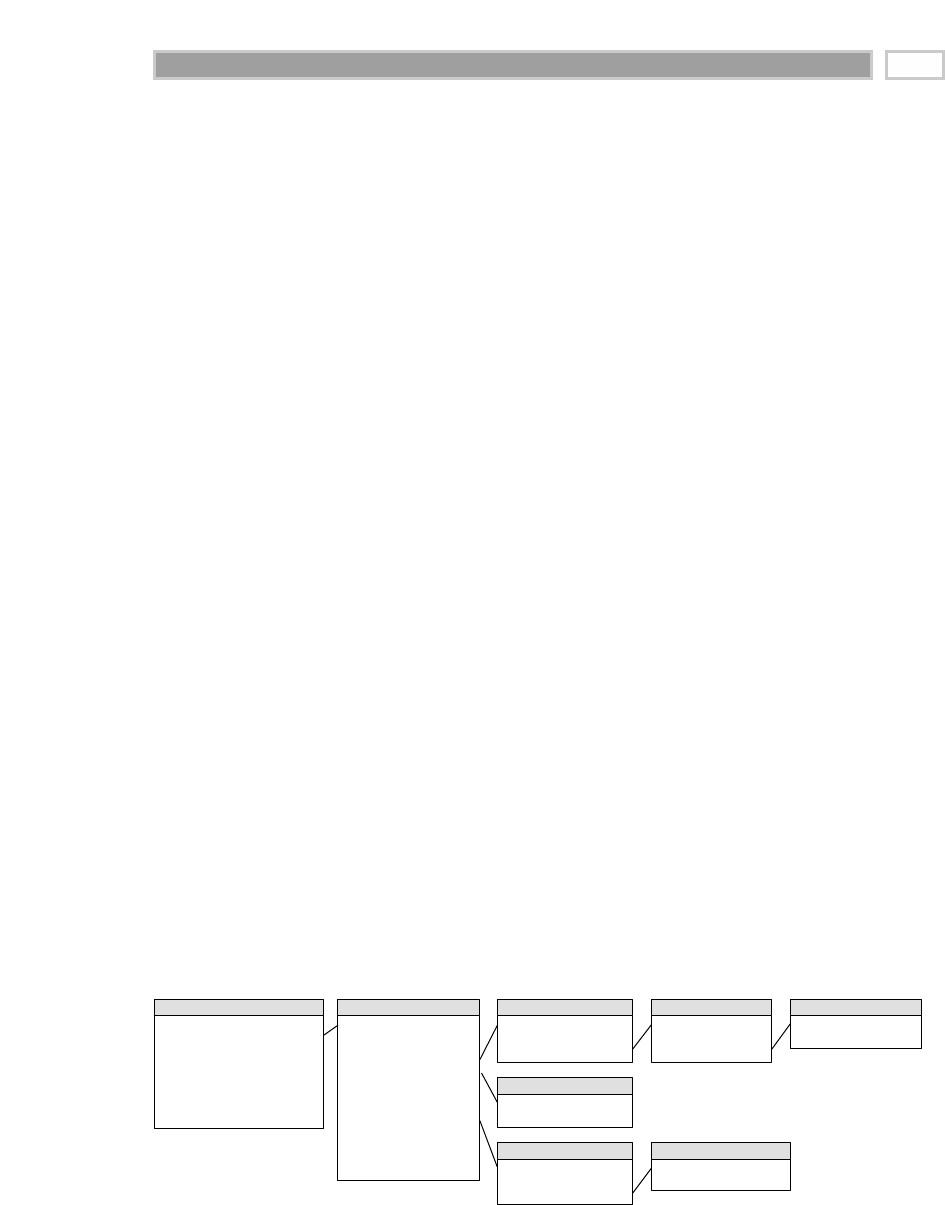

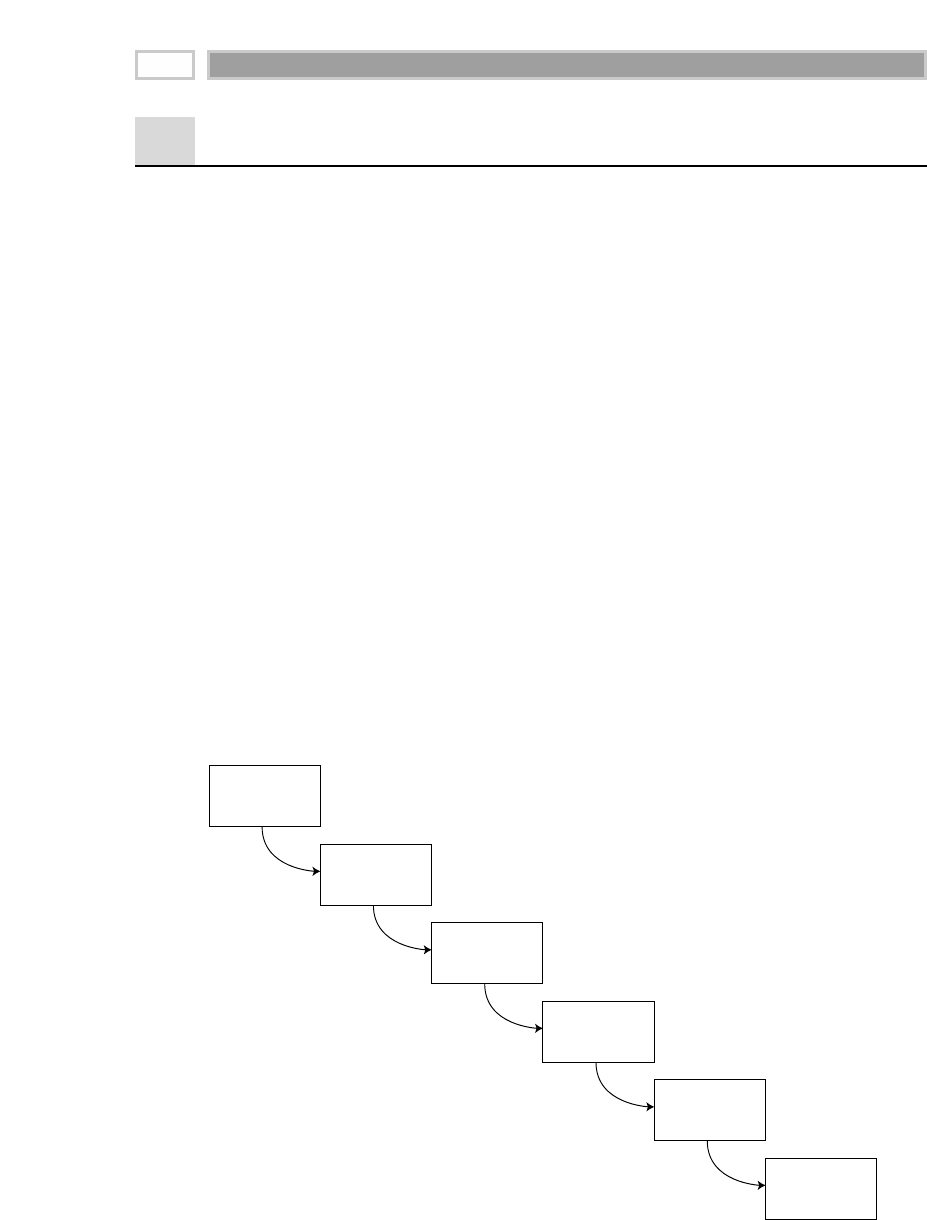

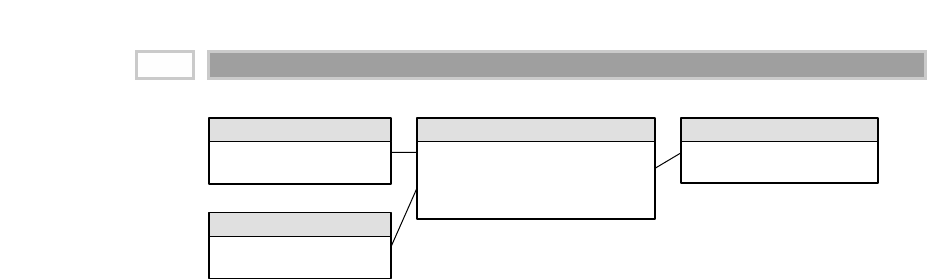

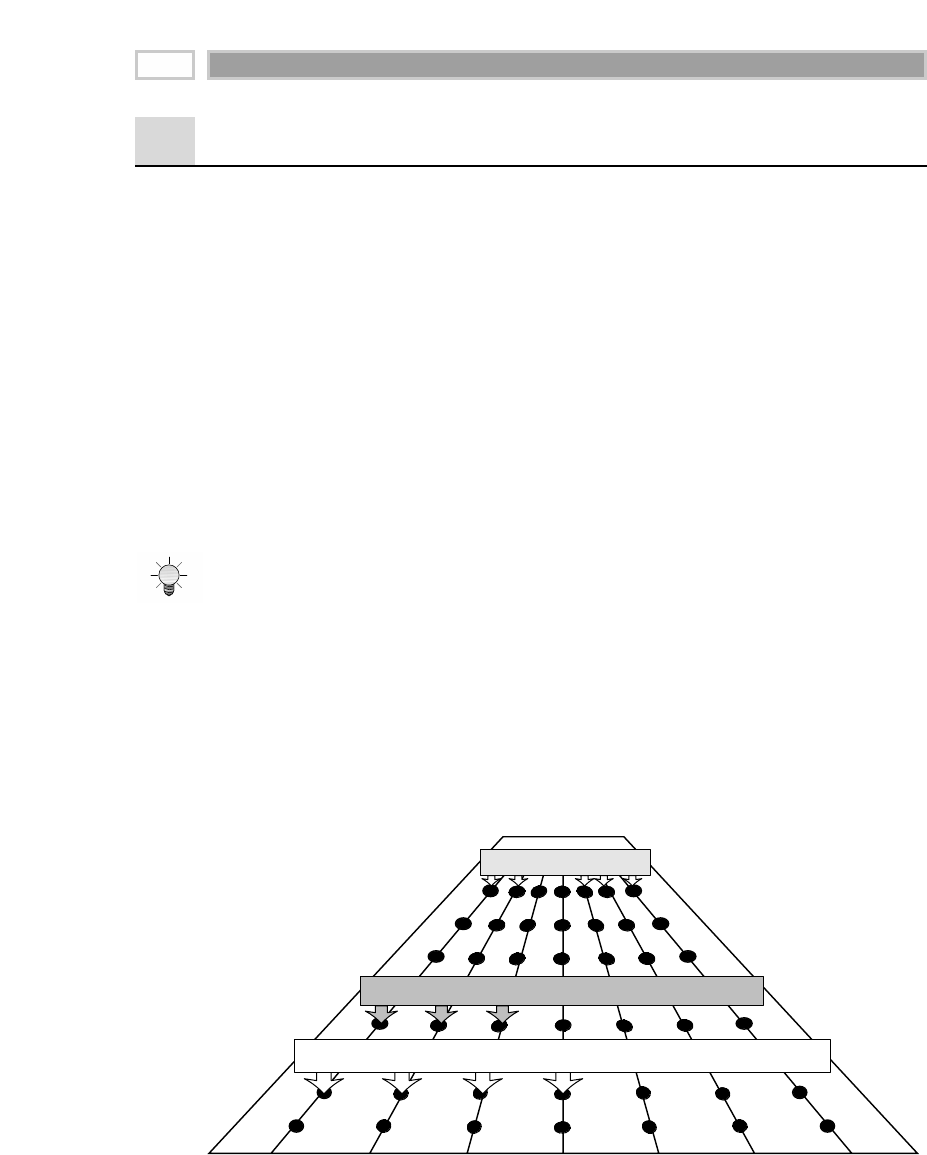

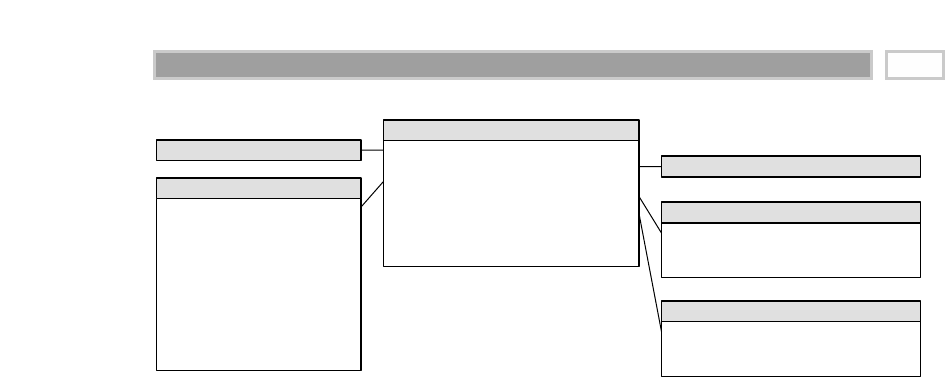

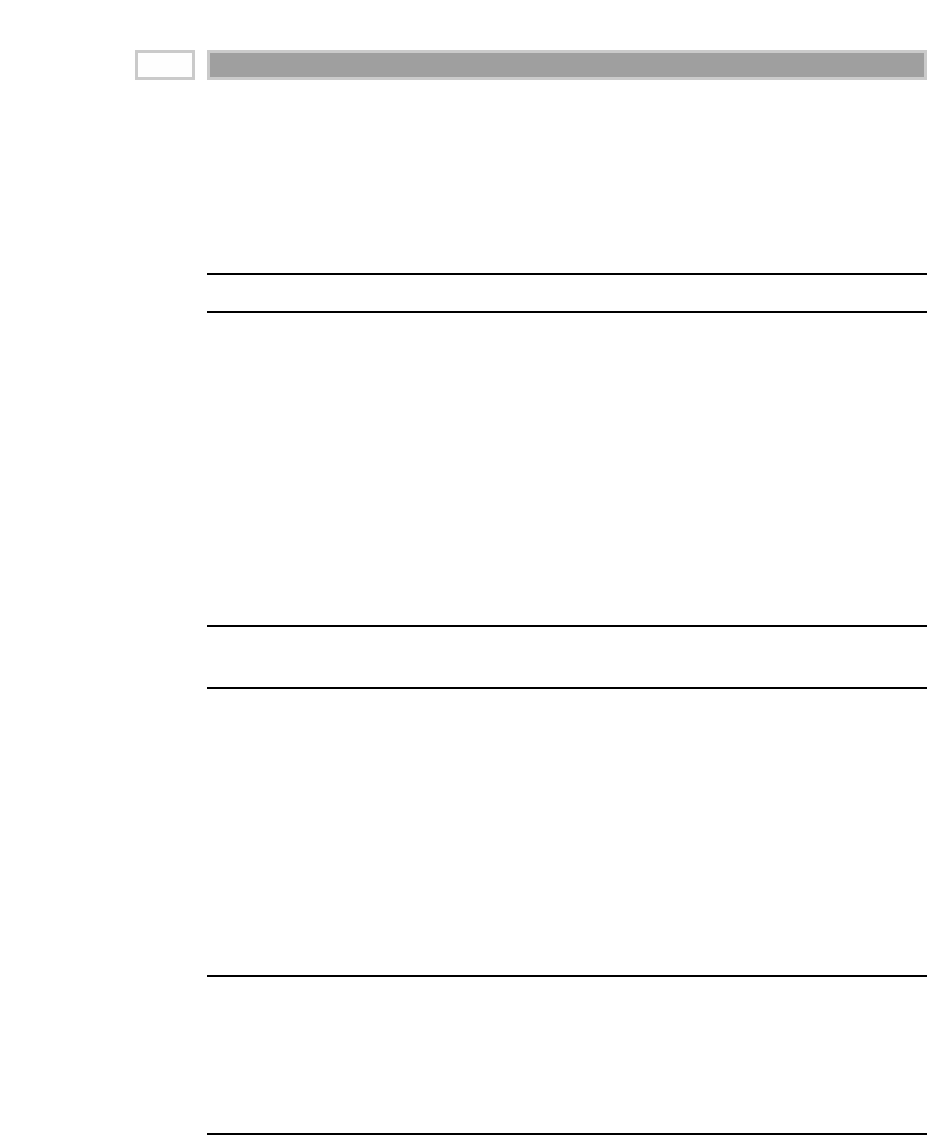

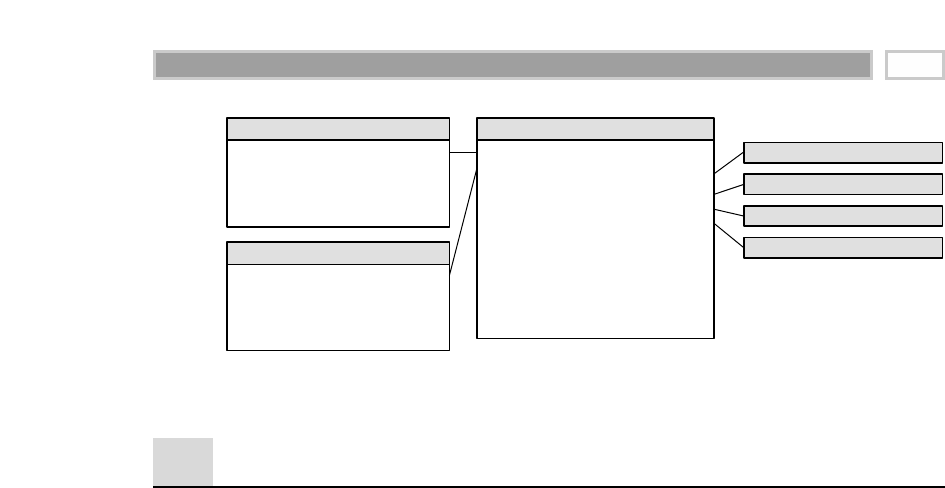

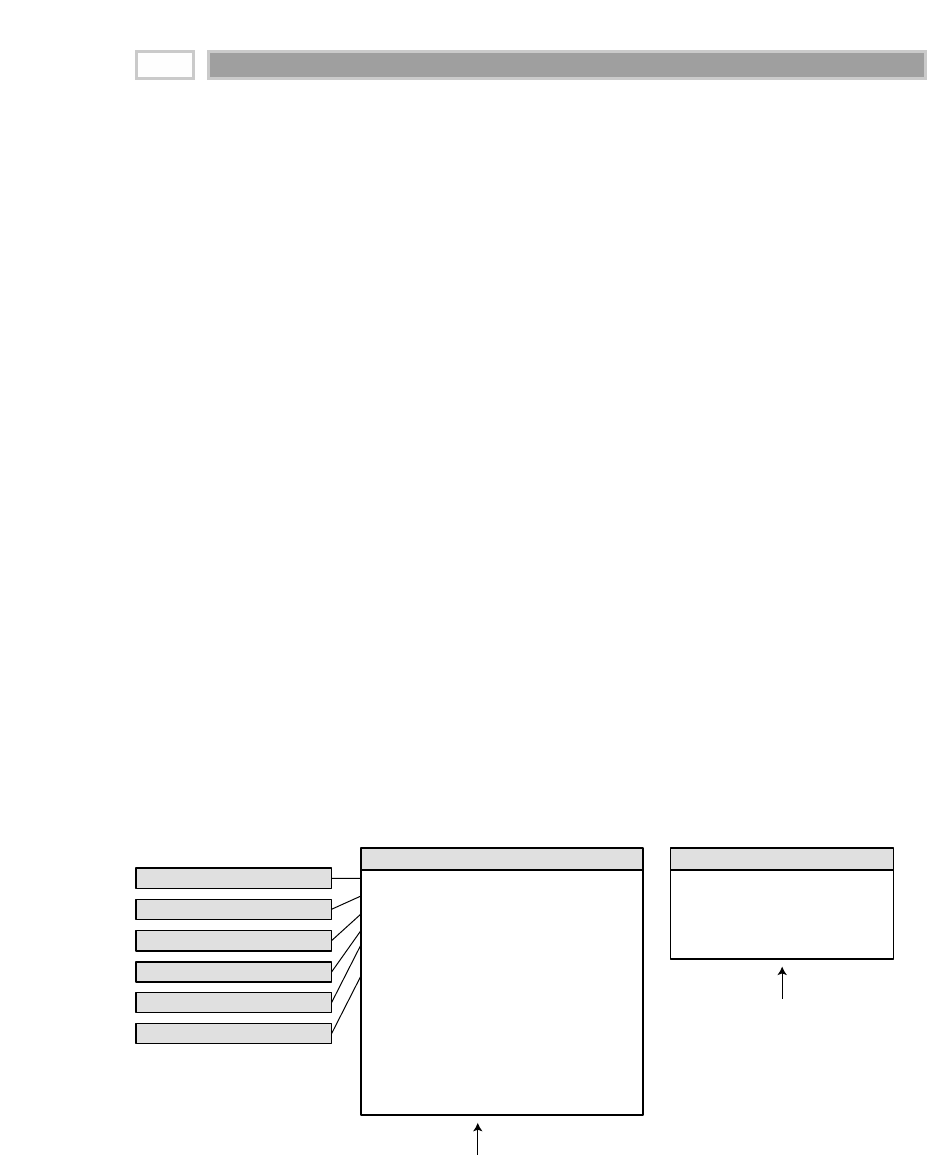

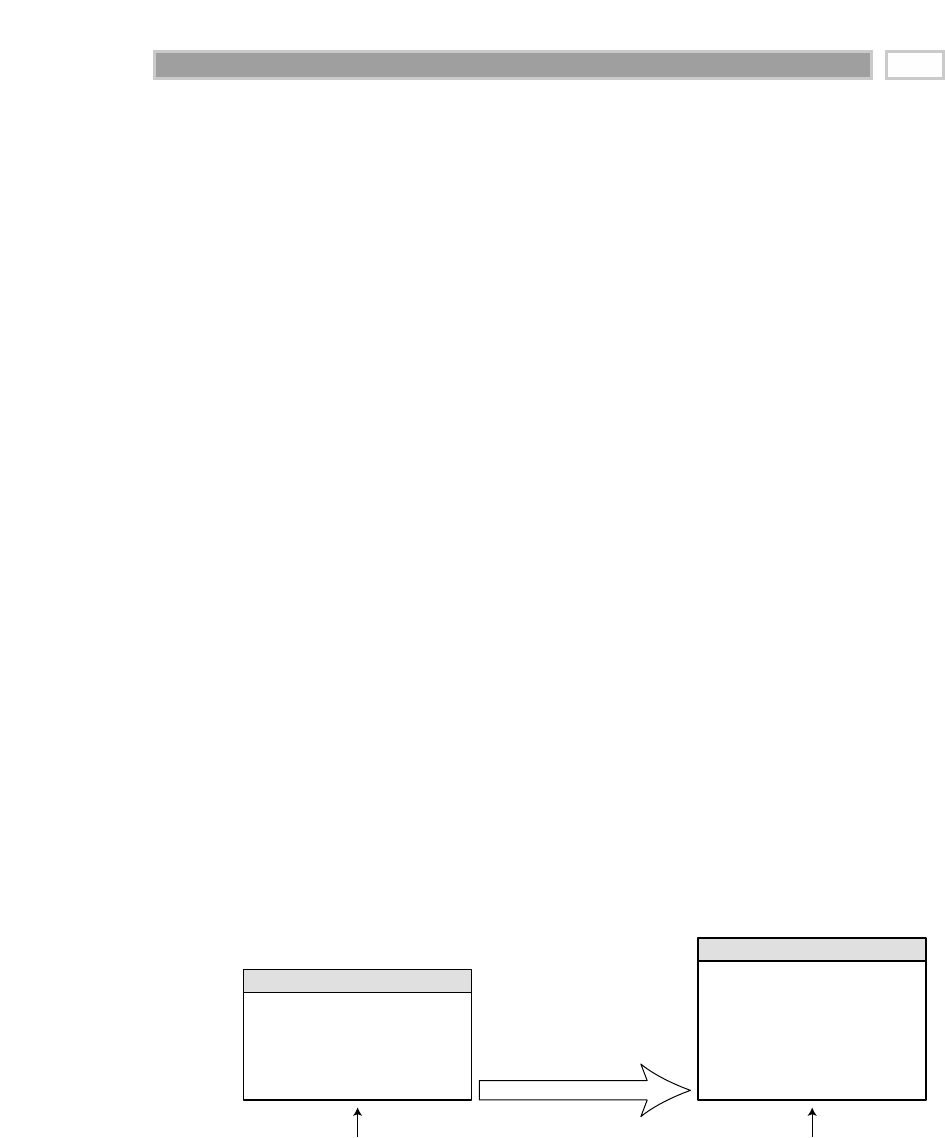

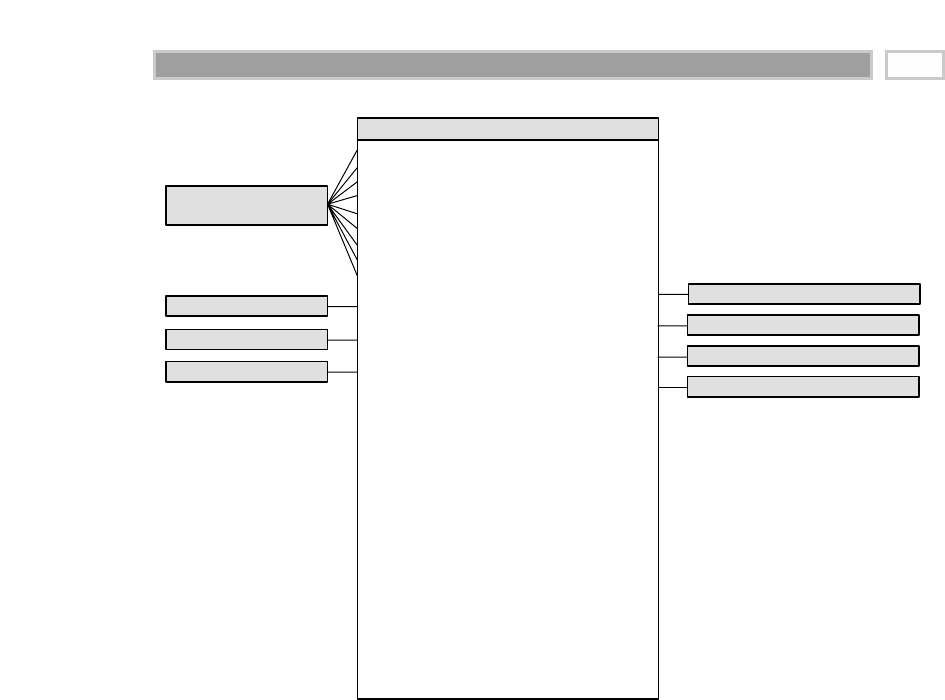

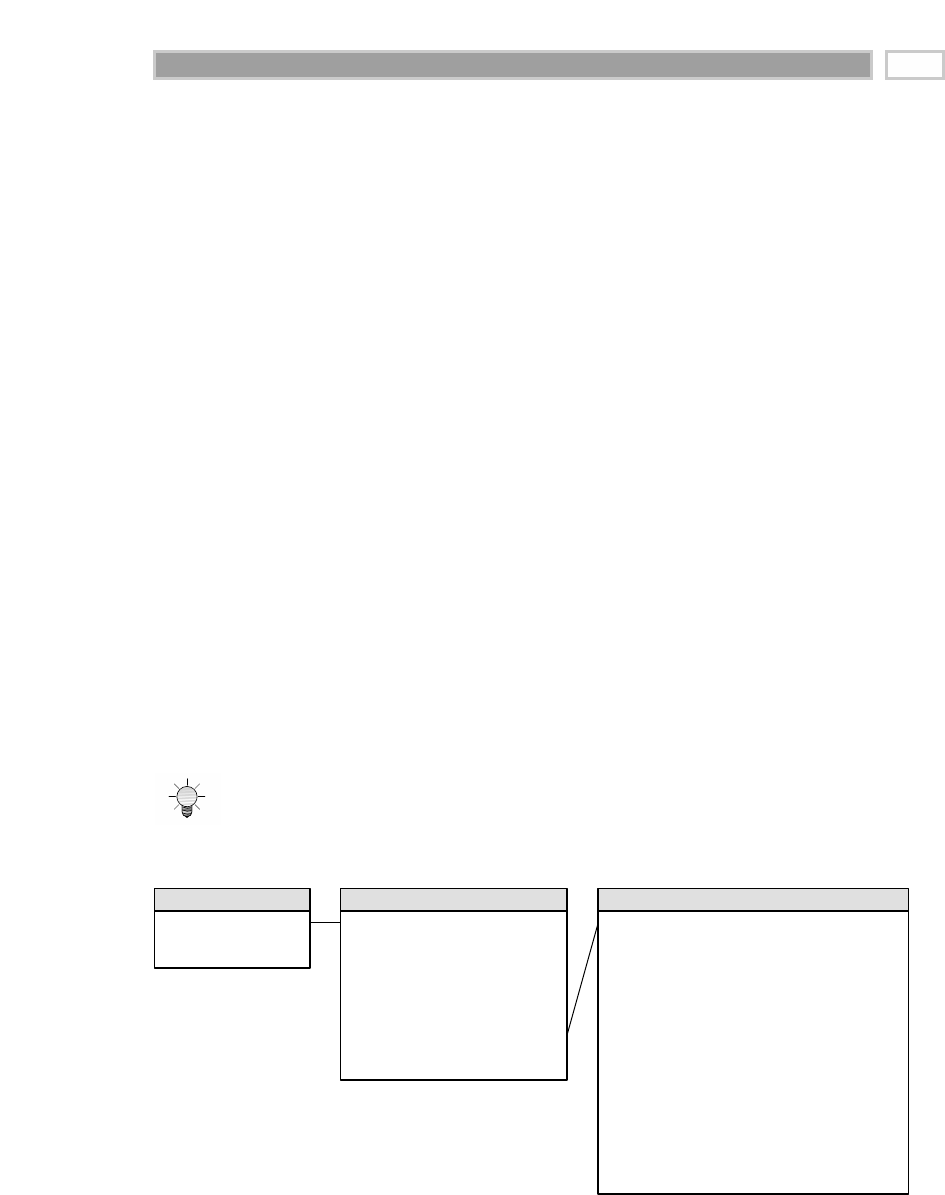

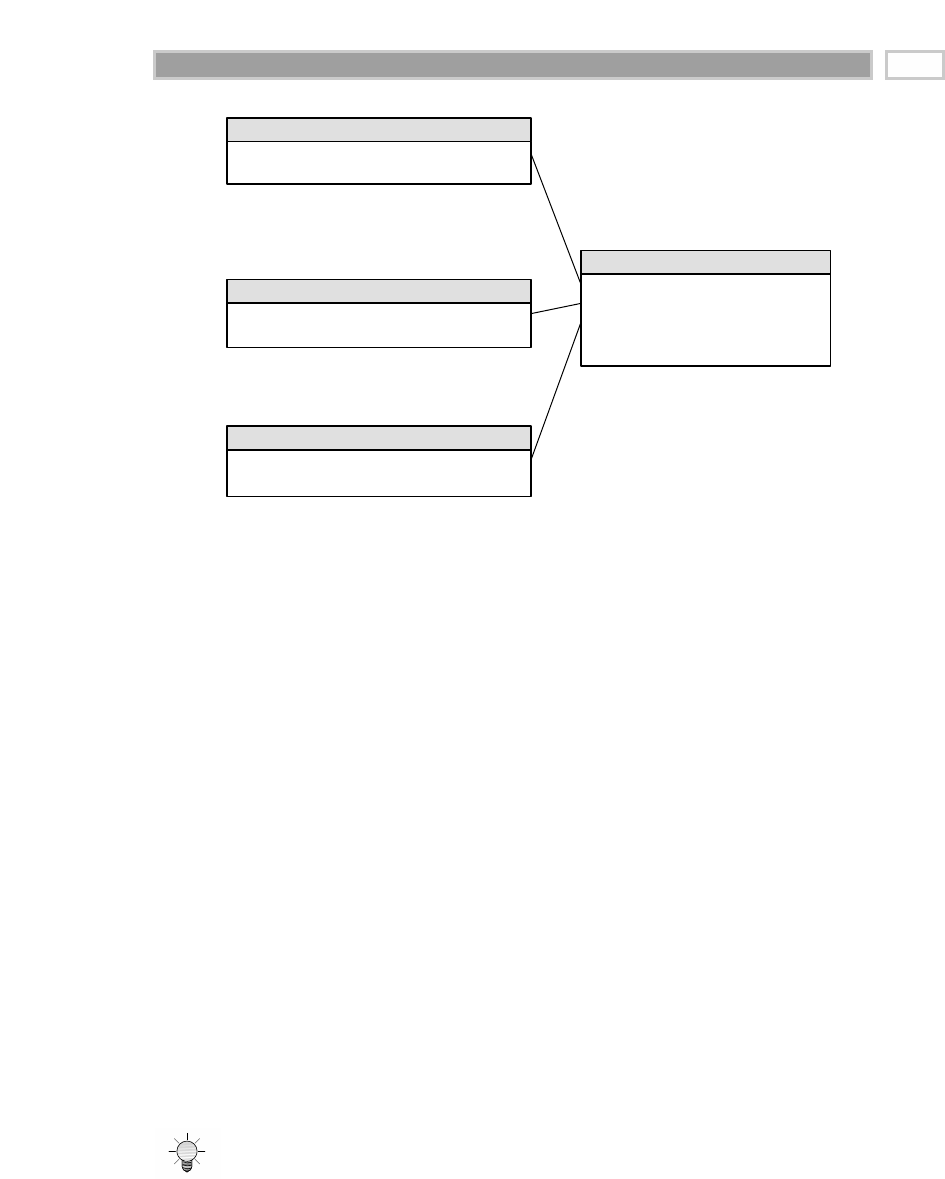

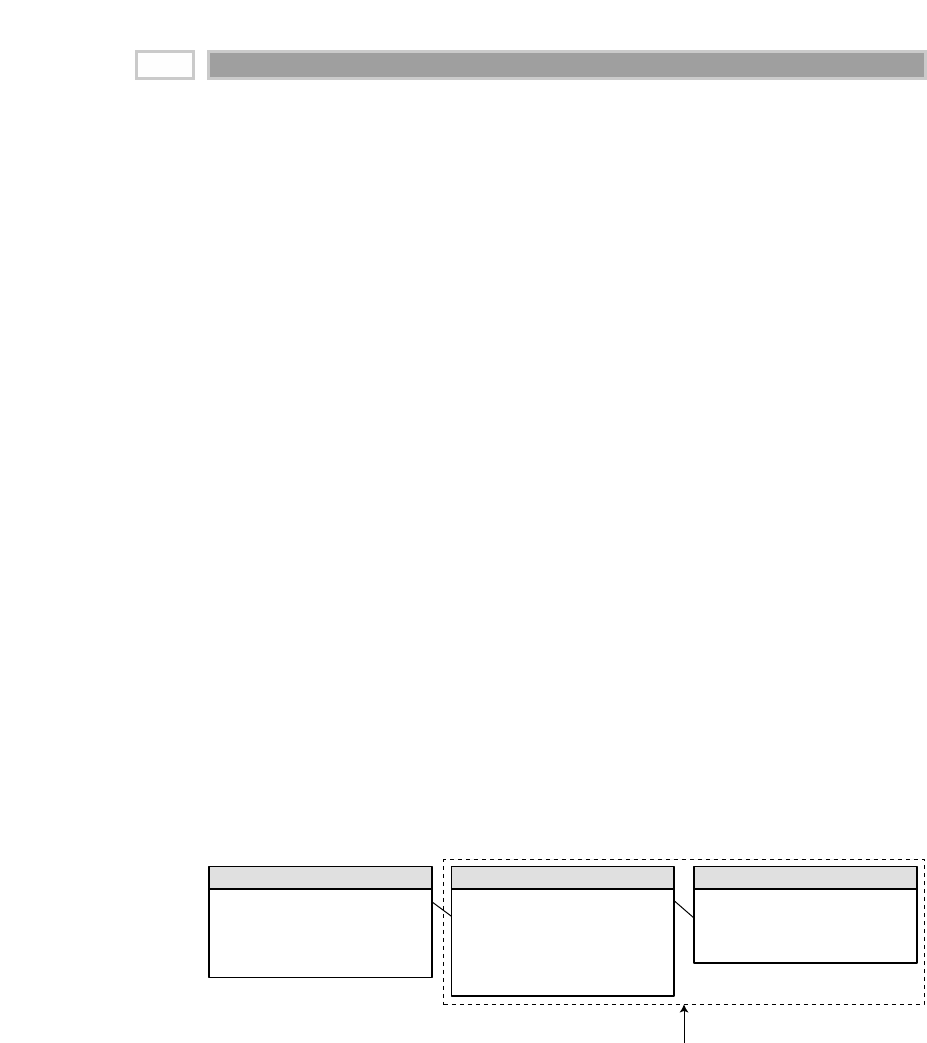

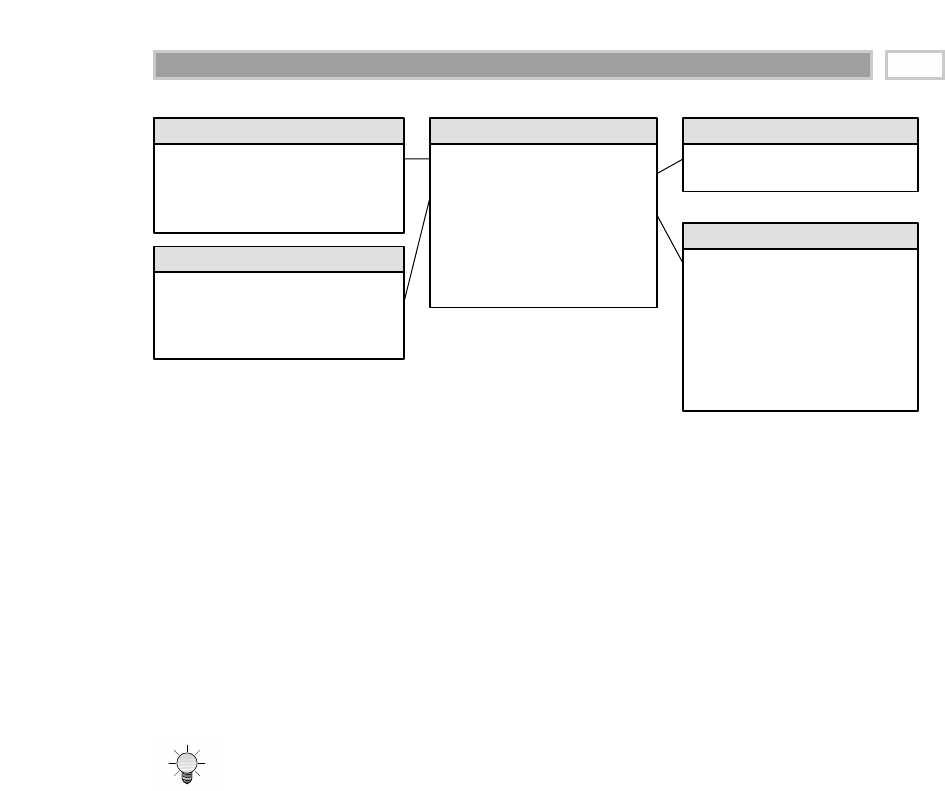

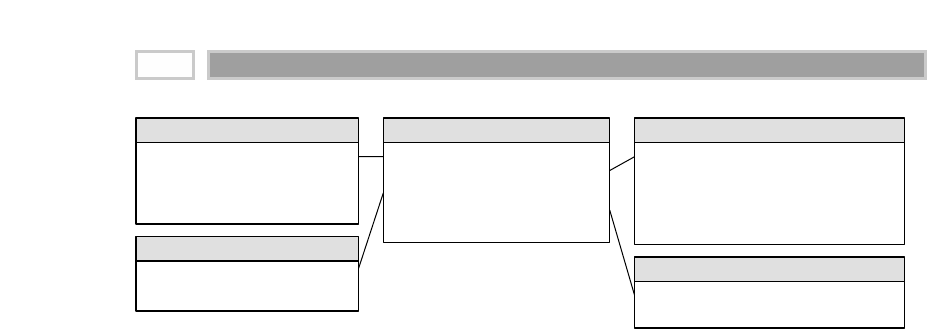

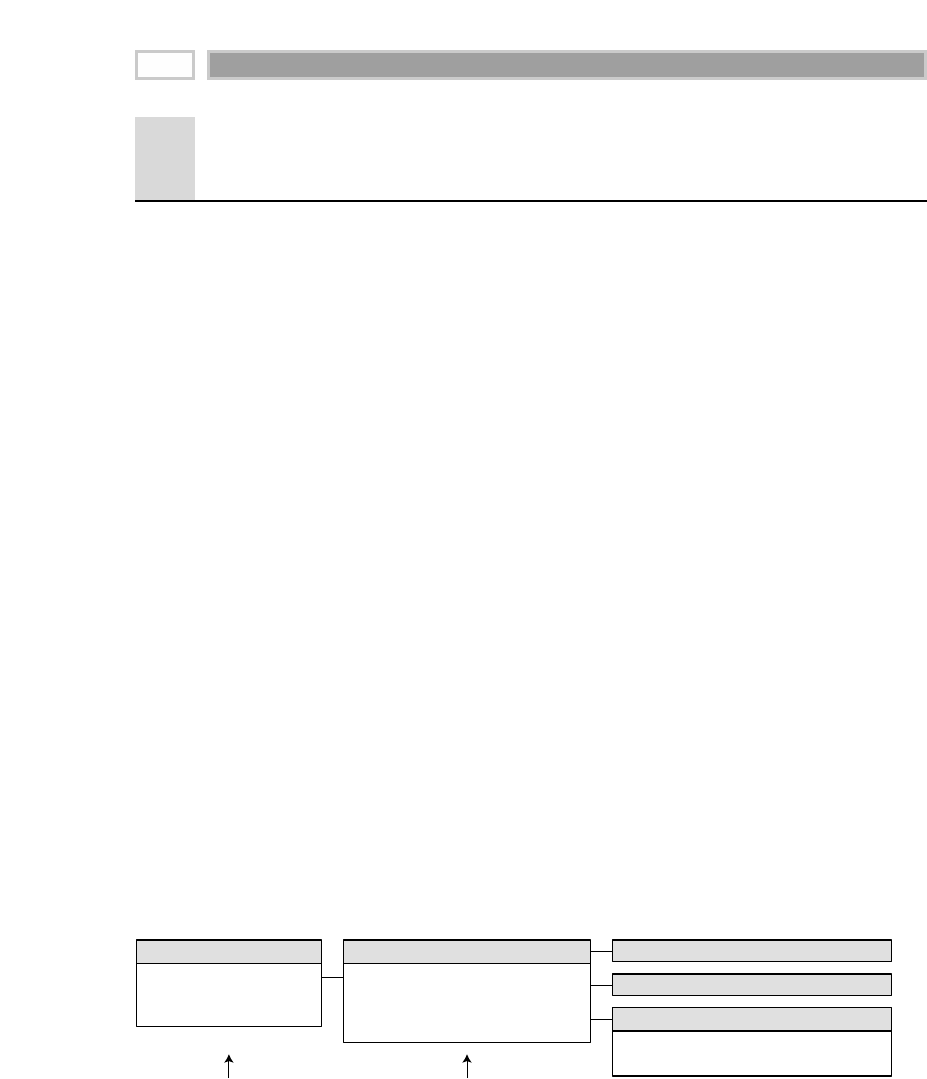

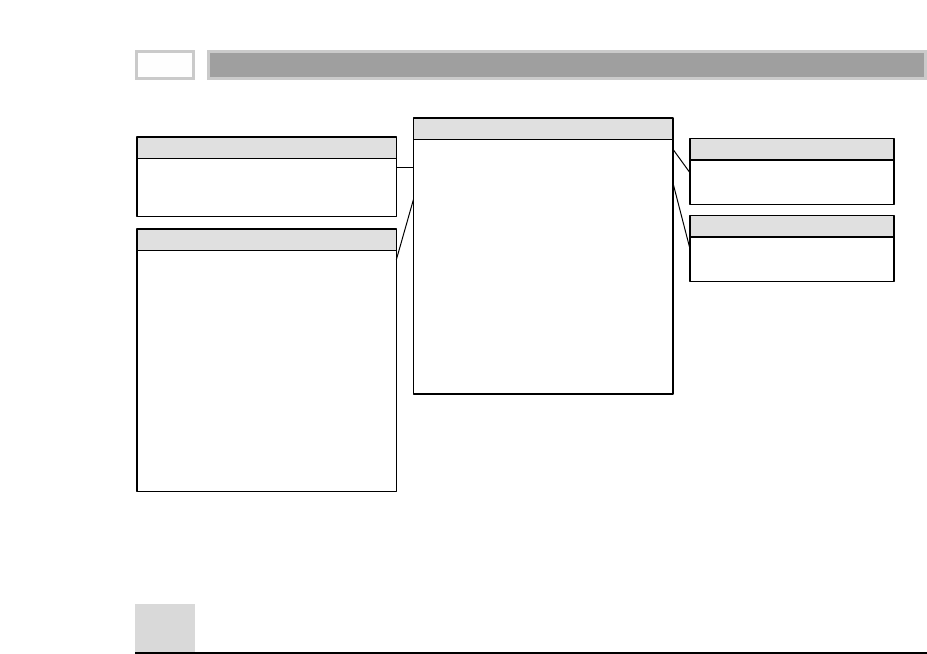

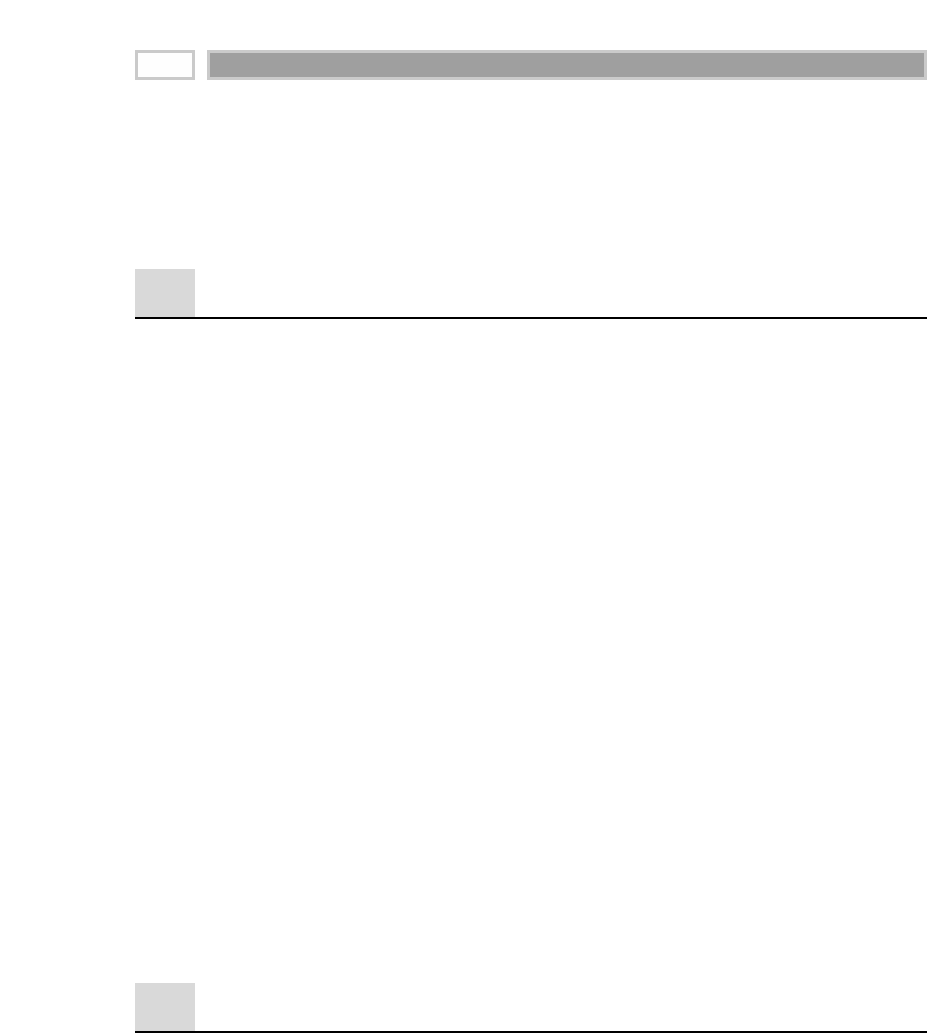

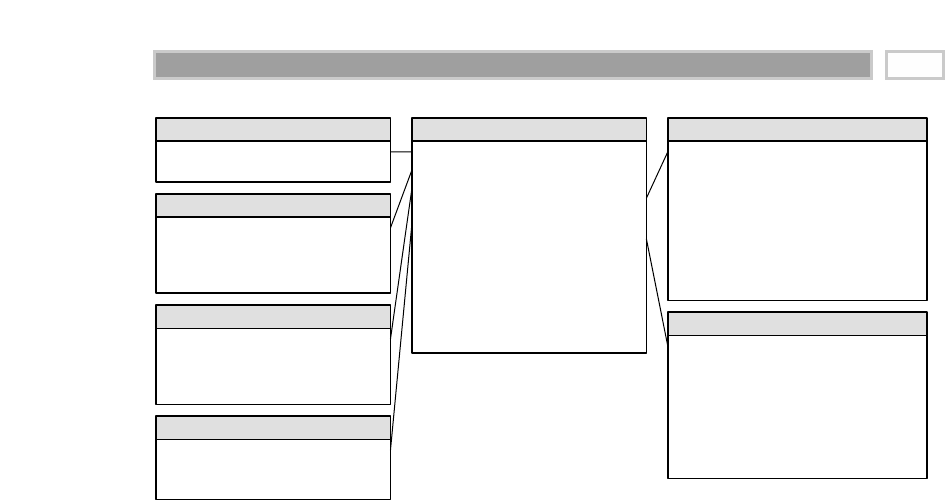

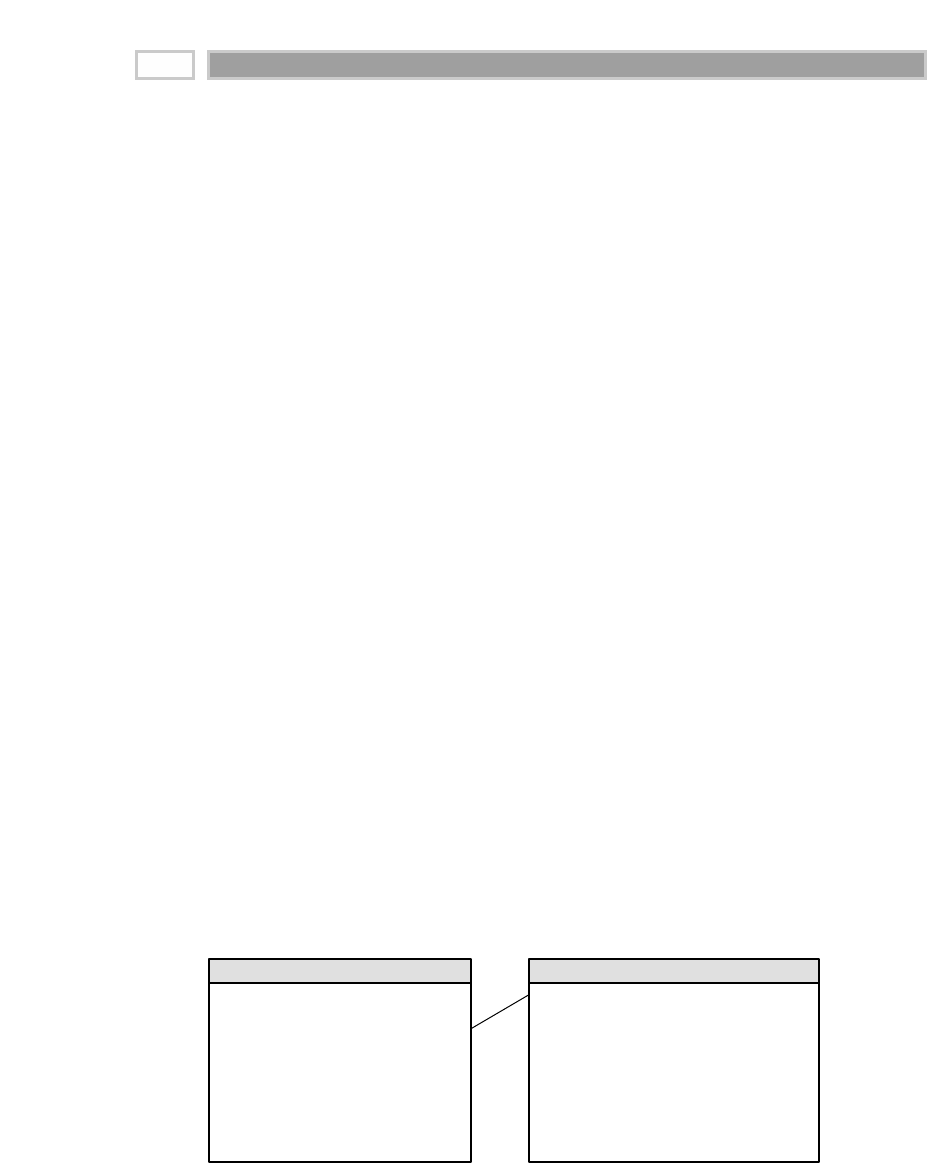

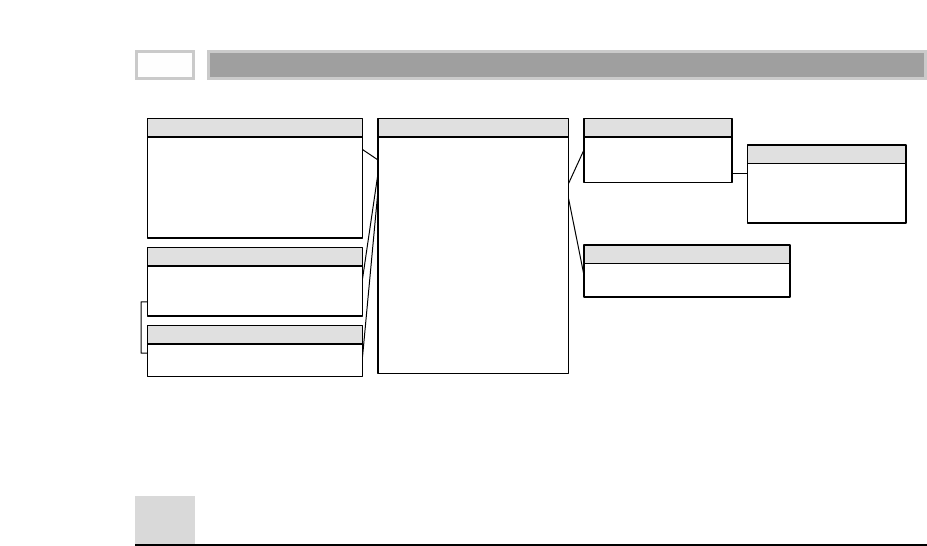

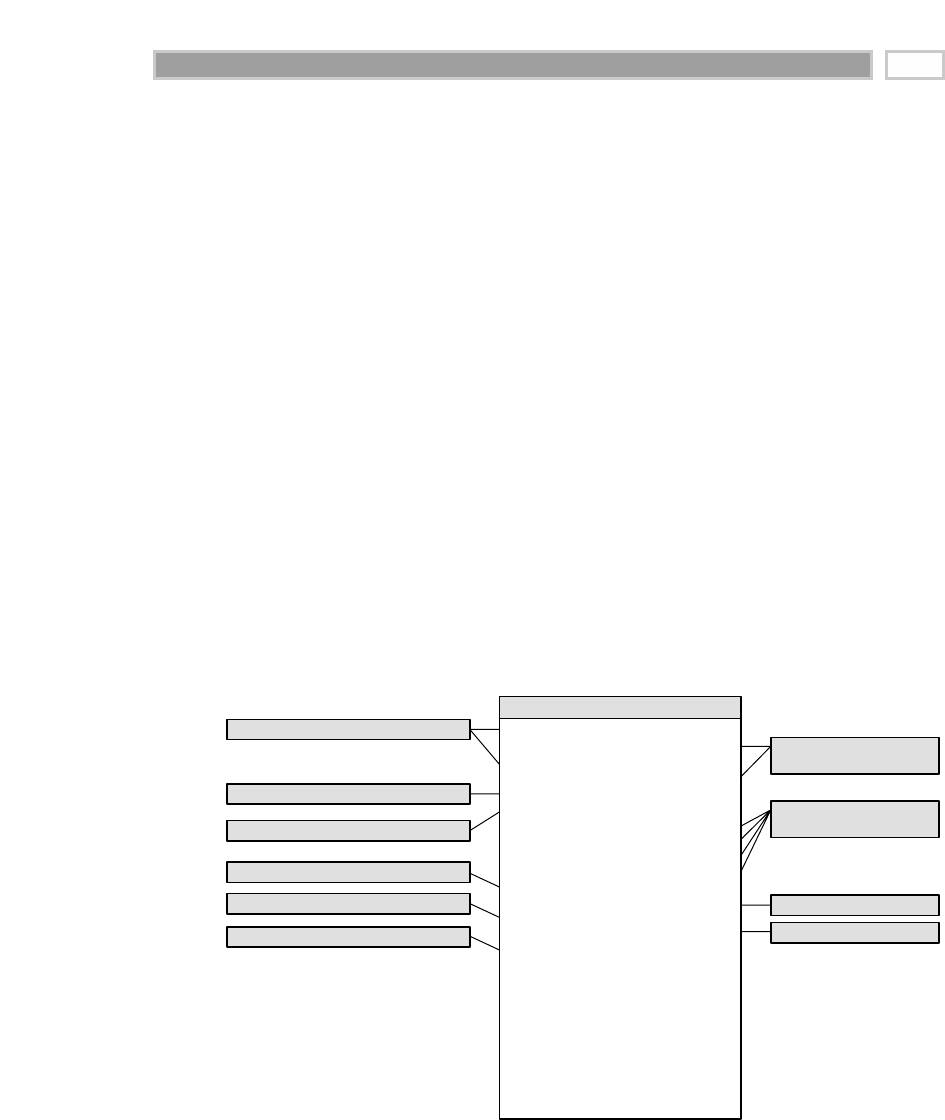

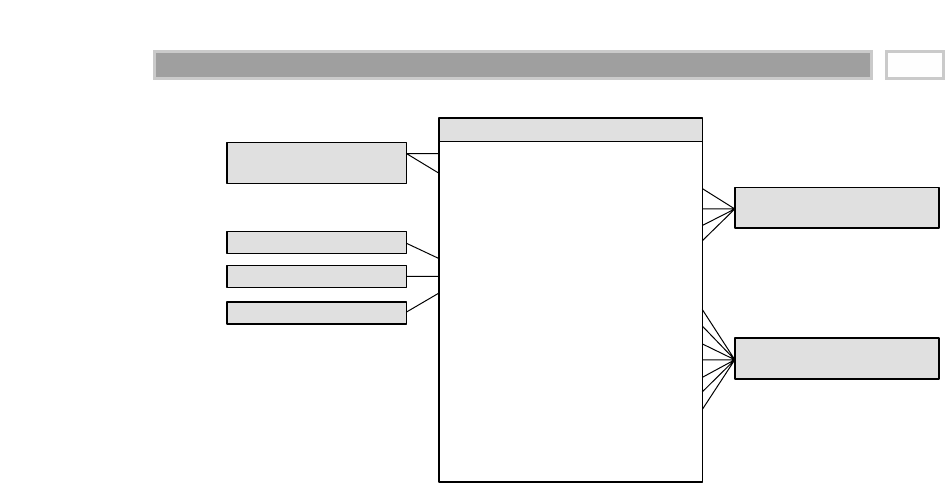

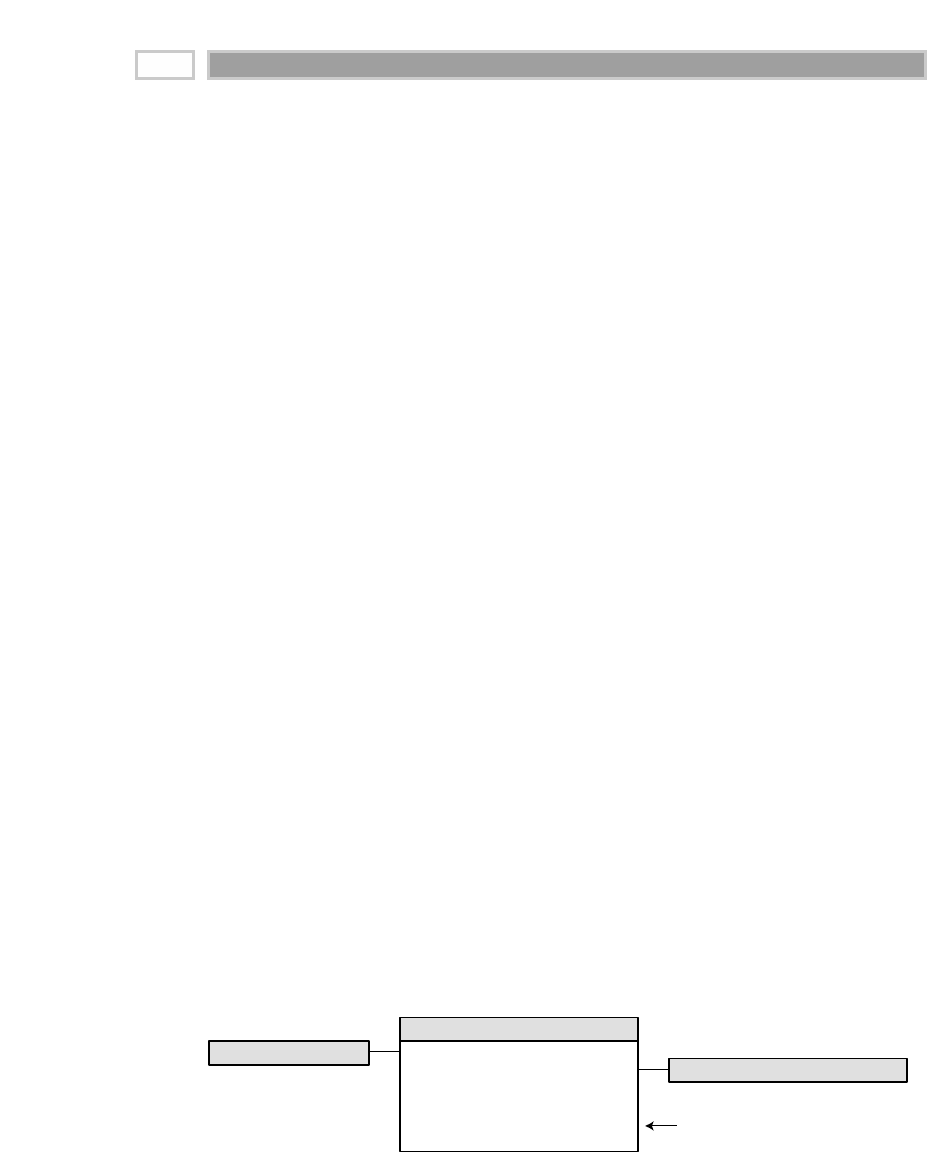

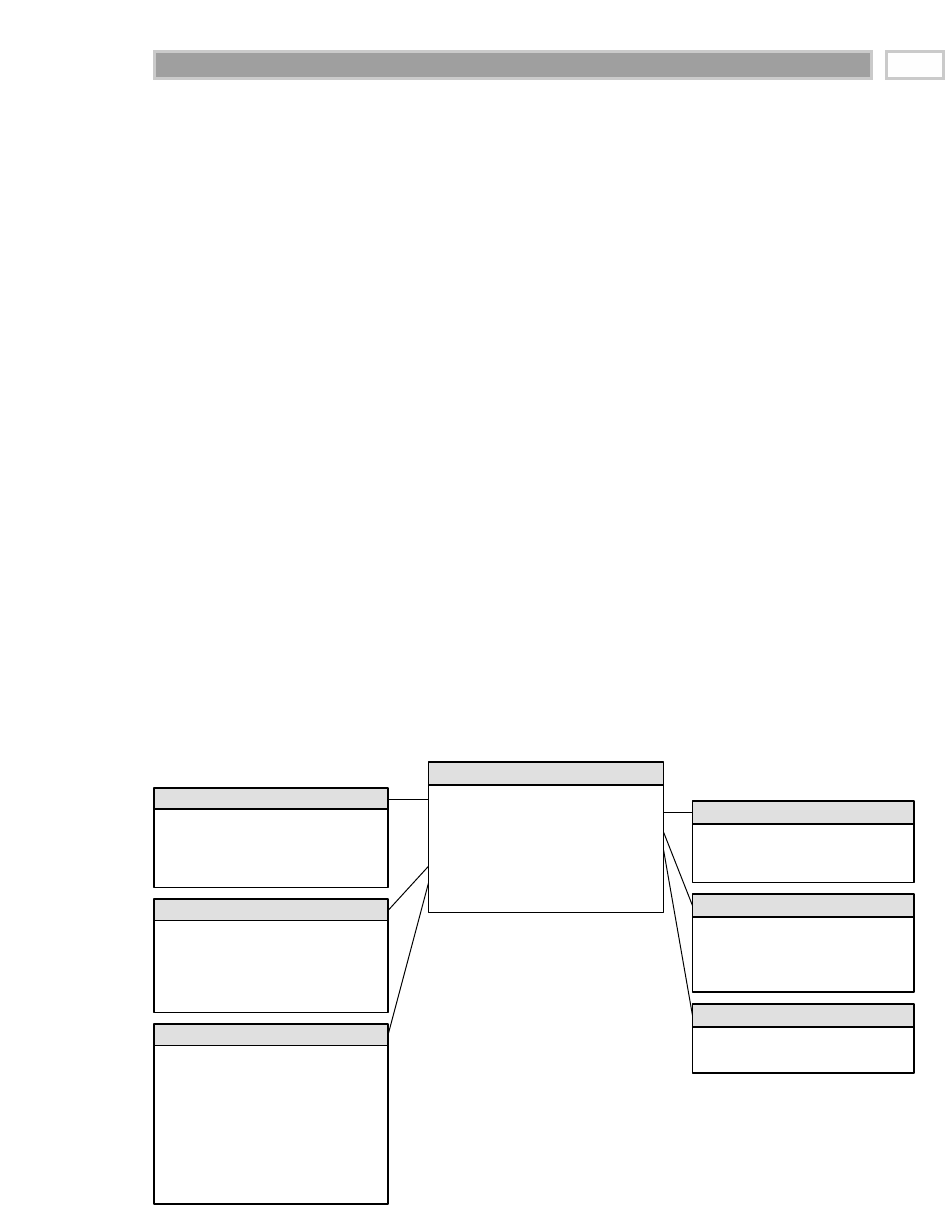

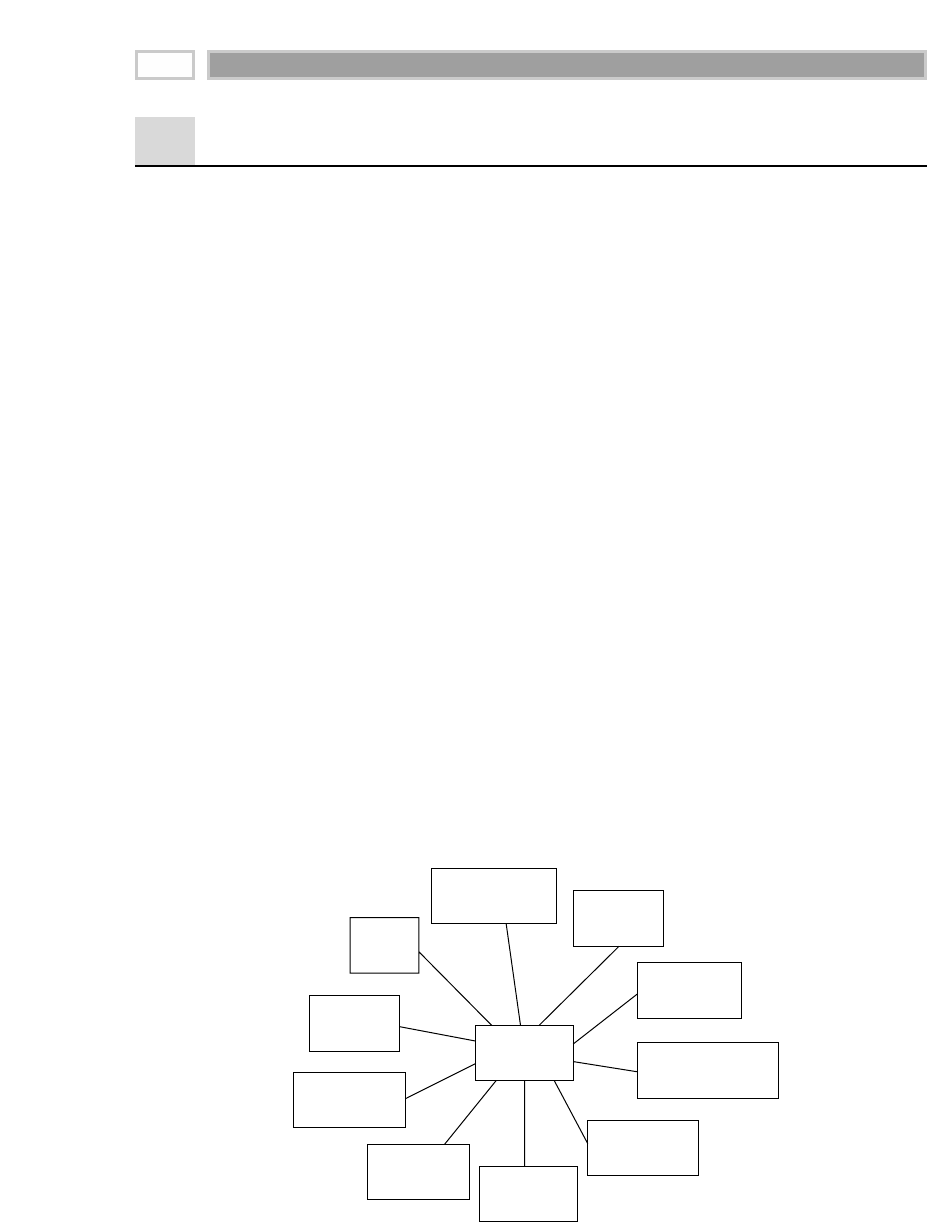

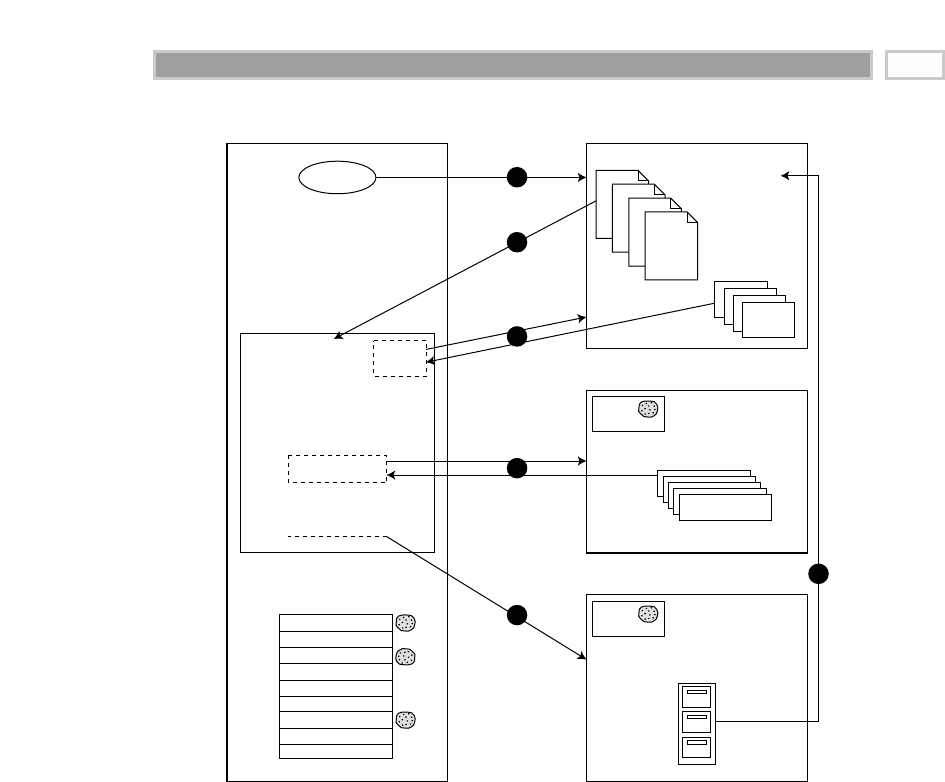

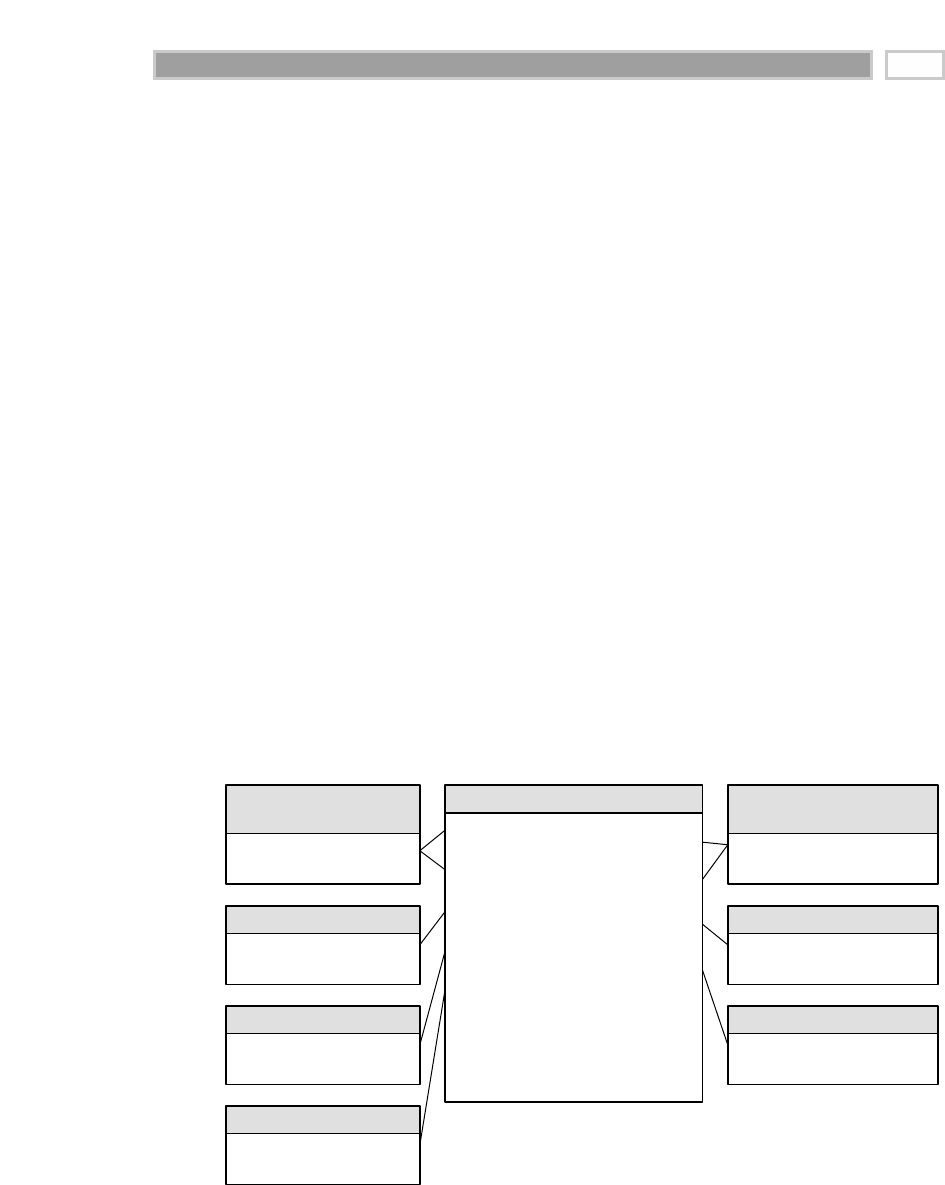

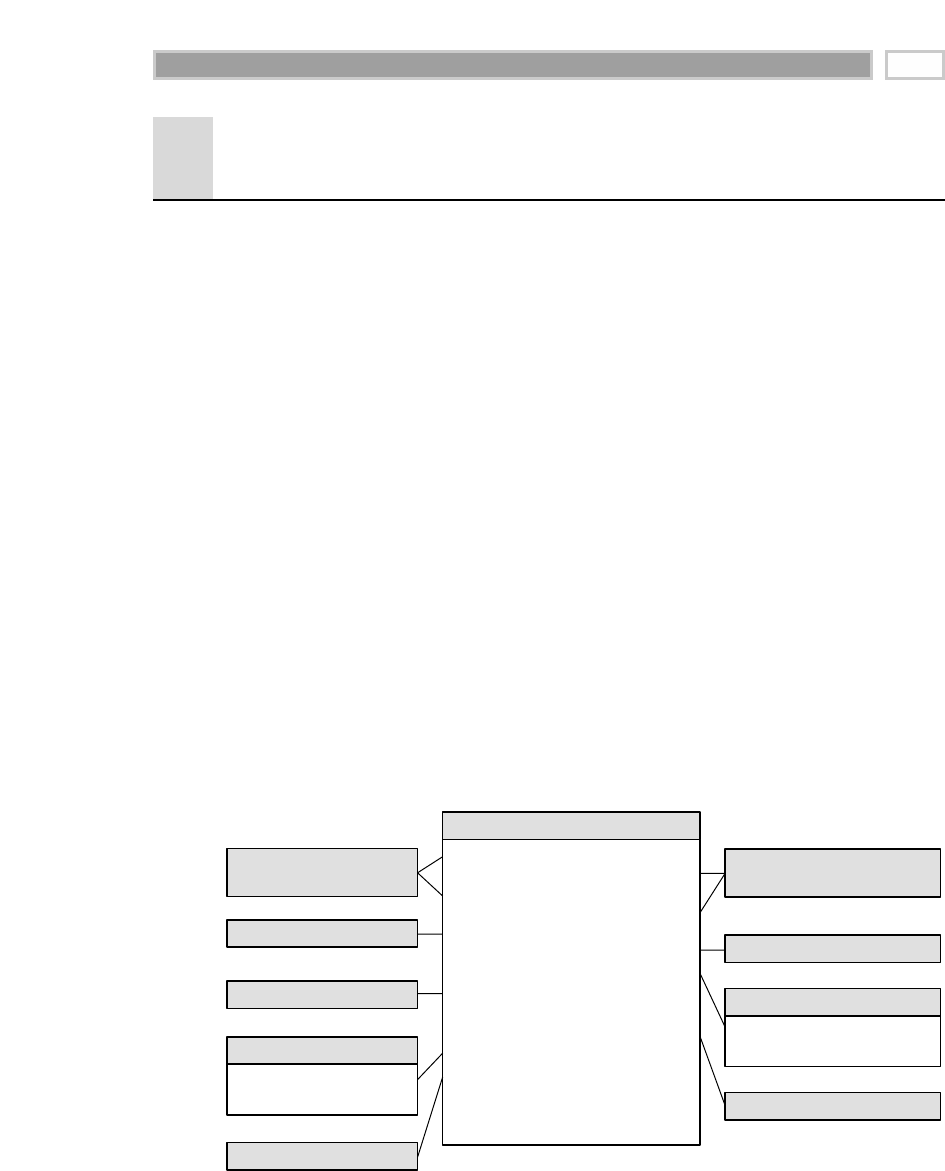

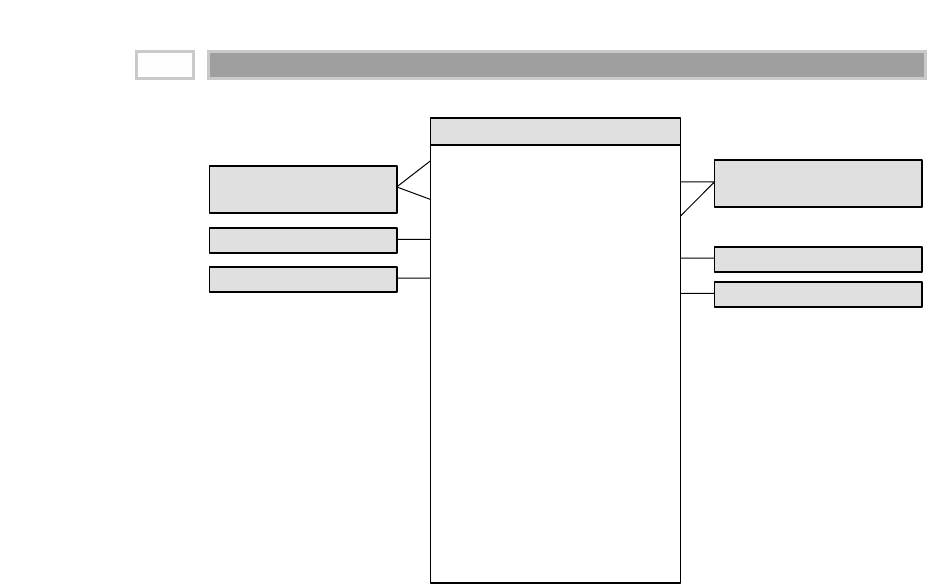

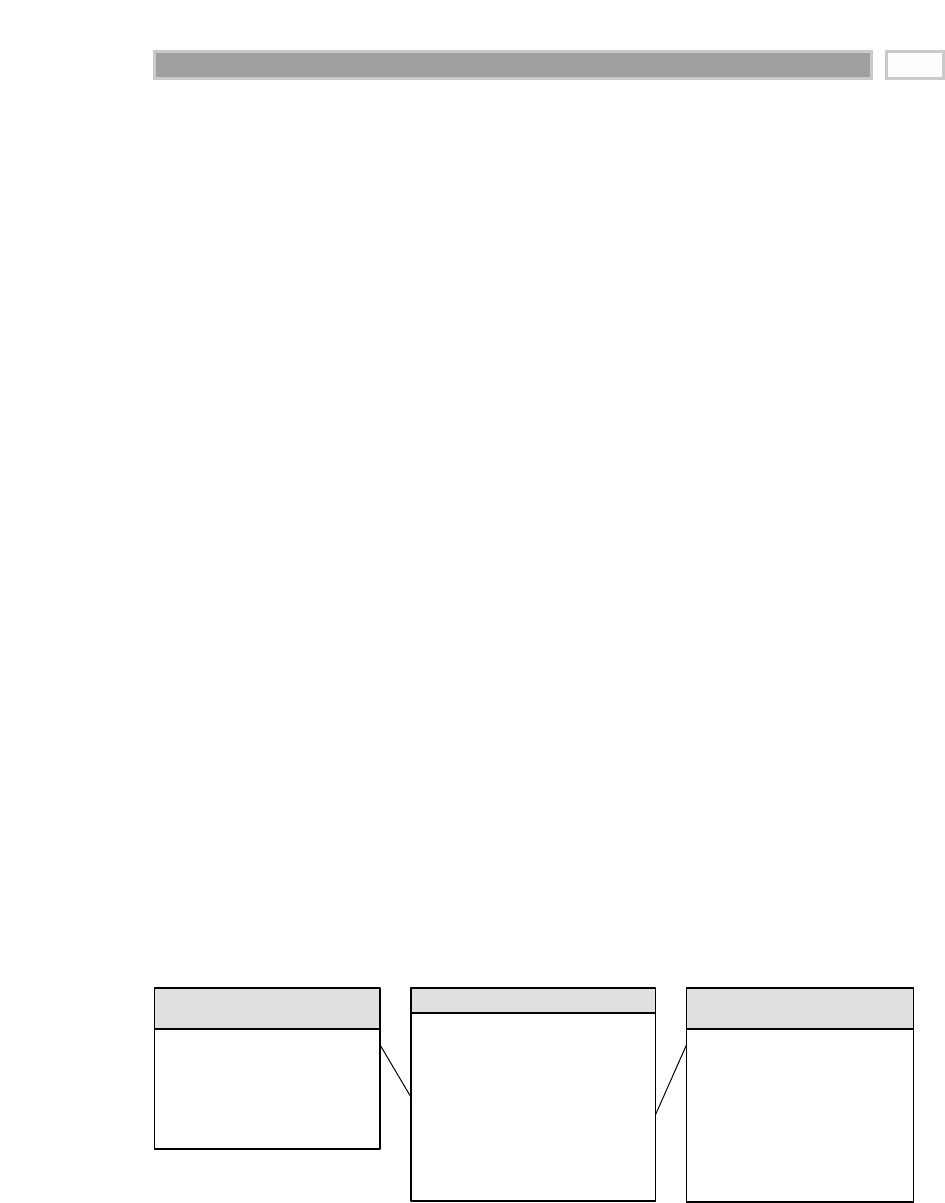

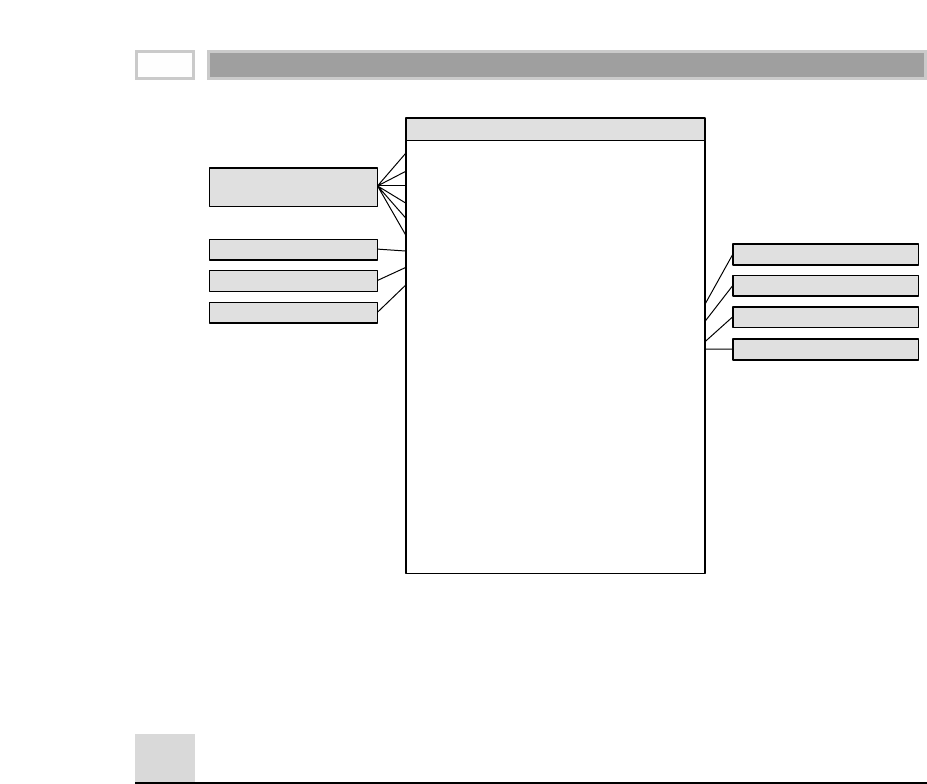

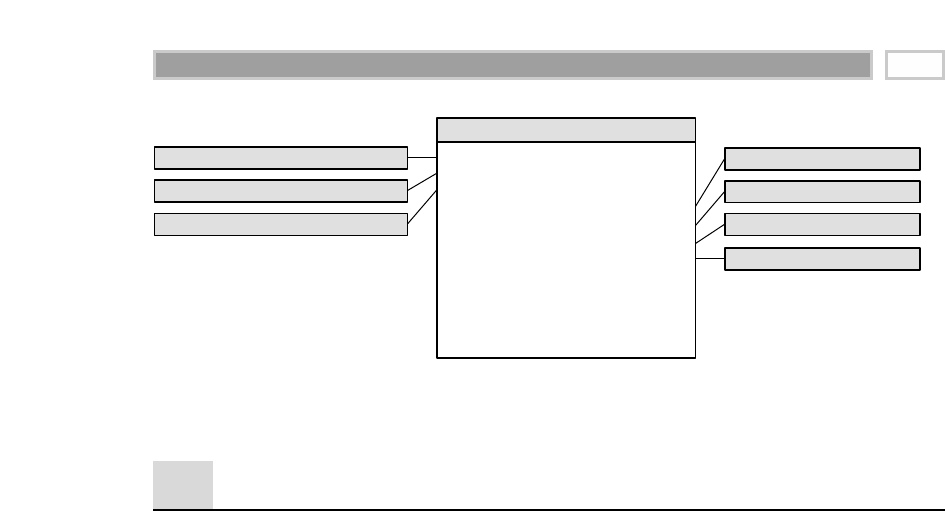

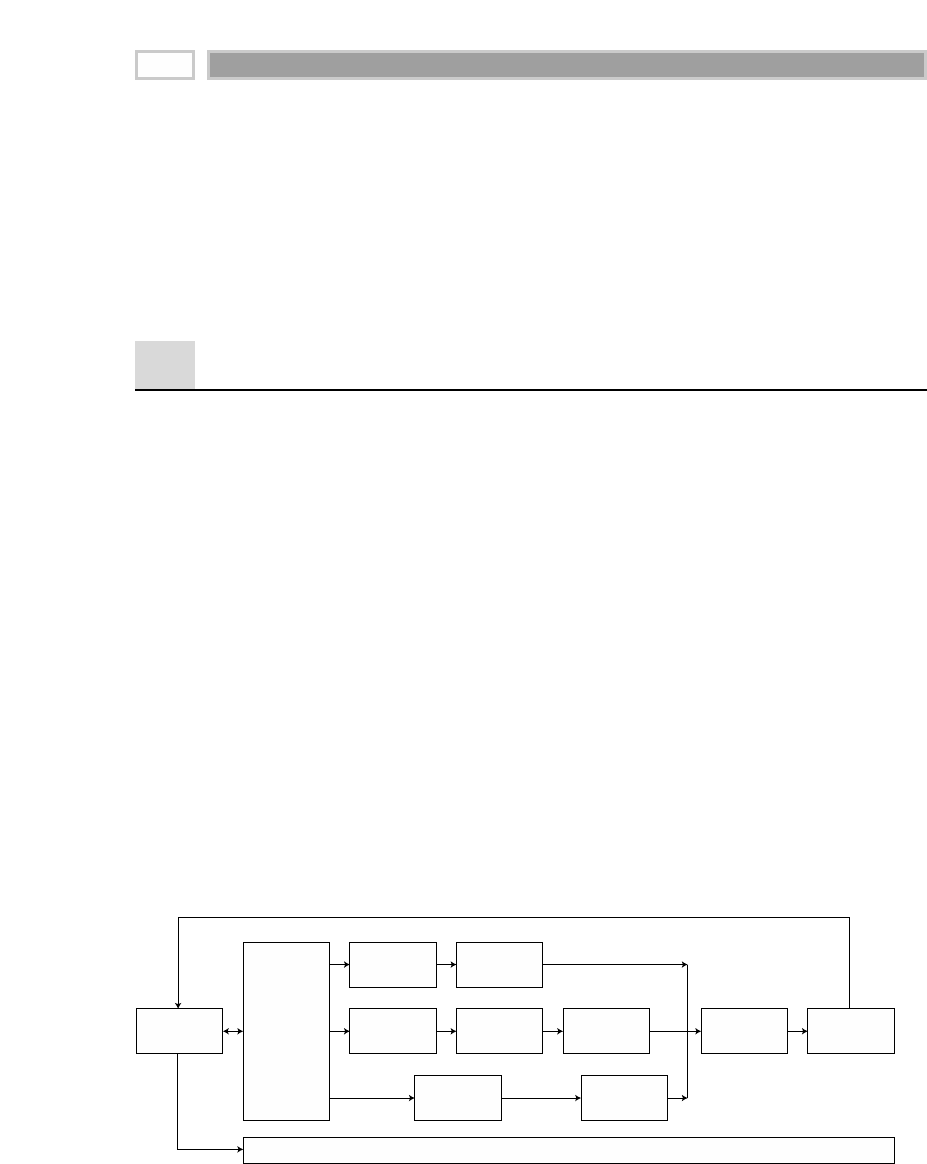

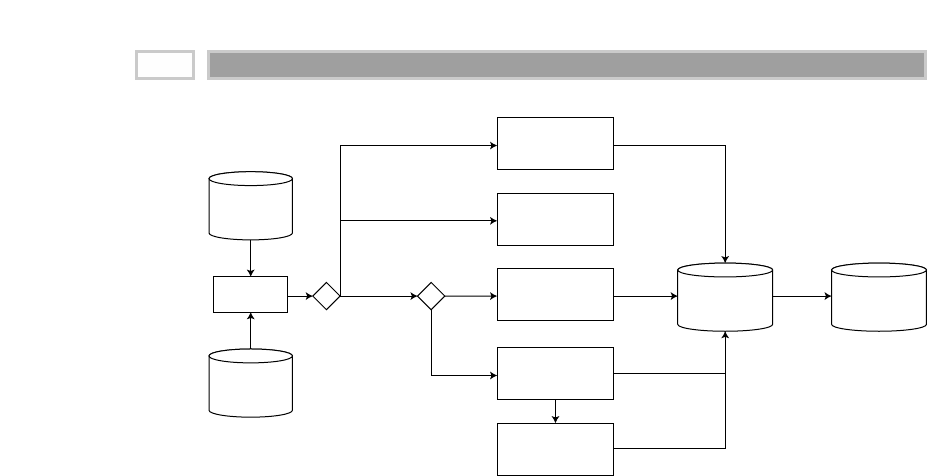

As illustrated in Figure 1.1, there are four separate and distinct components to

be considered as we explore the data warehouse environment—operational

source systems, data staging area, data presentation area, and data access tools.

Operational Source Systems

These are the operational systems of record that capture the transactions of the

business. The source systems should be thought of as outside the data ware-

house because presumably we have little to no control over the content and for-

mat of the data in these operational legacy systems. The main priorities of the

source systems are processing performance and availability. Queries against

source systems are narrow, one-record-at-a-time queries that are part of the nor-

mal transaction flow and severely restricted in their demands on the opera-

tional system. We make the strong assumption that source systems are not

queried in the broad and unexpected ways that data warehouses typically are

queried. The source systems maintain little historical data, and if you have a

good data warehouse, the source systems can be relieved of much of the

responsibility for representing the past. Each source system is often a natural

stovepipe application, where little investment has been made to sharing com-

mon data such as product, customer, geography, or calendar with other opera-

tional systems in the organization. It would be great if your source systems

were being reengineered with a consistent view. Such an enterprise application

integration (EAI) effort will make the data warehouse design task far easier.

Figure 1.1 Basic elements of the data warehouse.

Extract

Extract

Extract

Load

Load

Operational

Source

Systems

Data

Staging

Area

Services:

Clean, combine,

and standardize

Conform

dimensions

NO USER QUERY

SERVICES

Data Store:

Flat files and

relational tables

Processing:

Sorting and

sequential

processing

Access

Access

Ad Hoc Query Tools

Report Writers

Analytic

Applications

Modeling:

Forecasting

Scoring

Data mining

Data

Presentation

Area

Data

Access

Tools

Data Mart #1

DIMENSIONAL

Atomic and

summary data

Based on a single

business process

Data Mart #2 ...

(Similarly designed)

DW Bus:

Conformed

facts &

dimensions

Dimensional Modeling Primer 7

Data Staging Area

The data staging area of the data warehouse is both a storage area and a set of

processes commonly referred to as extract-transformation-load (ETL). The data

staging area is everything between the operational source systems and the

data presentation area. It is somewhat analogous to the kitchen of a restaurant,

where raw food products are transformed into a fine meal. In the data ware-

house, raw operational data is transformed into a warehouse deliverable fit for

user query and consumption. Similar to the restaurant’s kitchen, the backroom

data staging area is accessible only to skilled professionals. The data ware-

house kitchen staff is busy preparing meals and simultaneously cannot be

responding to customer inquiries. Customers aren’t invited to eat in the

kitchen. It certainly isn’t safe for customers to wander into the kitchen. We

wouldn’t want our data warehouse customers to be injured by the dangerous

equipment, hot surfaces, and sharp knifes they may encounter in the kitchen,

so we prohibit them from accessing the staging area. Besides, things happen in

the kitchen that customers just shouldn’t be privy to.

The key architectural requirement for the data staging area is that it is off-limits to

business users and does not provide query and presentation services.

Extraction is the first step in the process of getting data into the data ware-

house environment. Extracting means reading and understanding the source

data and copying the data needed for the data warehouse into the staging area

for further manipulation.

Once the data is extracted to the staging area, there are numerous potential

transformations, such as cleansing the data (correcting misspellings, resolving

domain conflicts, dealing with missing elements, or parsing into standard for-

mats), combining data from multiple sources, deduplicating data, and assign-

ing warehouse keys. These transformations are all precursors to loading the

data into the data warehouse presentation area.

Unfortunately, there is still considerable industry consternation about whether

the data that supports or results from this process should be instantiated in

physical normalized structures prior to loading into the presentation area for

querying and reporting. These normalized structures sometimes are referred

to in the industry as the enterprise data warehouse; however, we believe that this

terminology is a misnomer because the warehouse is actually much more

encompassing than this set of normalized tables. The enterprise’s data ware-

house more accurately refers to the conglomeration of an organization’s data

warehouse staging and presentation areas. Thus, throughout this book, when

we refer to the enterprise data warehouse, we mean the union of all the diverse

data warehouse components, not just the backroom staging area.

CHAPTER 1

8

The data staging area is dominated by the simple activities of sorting and

sequential processing. In many cases, the data staging area is not based on

relational technology but instead may consist of a system of flat files. After you

validate your data for conformance with the defined one-to-one and many-to-

one business rules, it may be pointless to take the final step of building a full-

blown third-normal-form physical database.

However, there are cases where the data arrives at the doorstep of the data

staging area in a third-normal-form relational format. In these situations, the

managers of the data staging area simply may be more comfortable perform-

ing the cleansing and transformation tasks using a set of normalized struc-

tures. A normalized database for data staging storage is acceptable. However,

we continue to have some reservations about this approach. The creation of

both normalized structures for staging and dimensional structures for presen-

tation means that the data is extracted, transformed, and loaded twice—once

into the normalized database and then again when we load the dimensional

model. Obviously, this two-step process requires more time and resources for

the development effort, more time for the periodic loading or updating of

data, and more capacity to store the multiple copies of the data. At the bottom

line, this typically translates into the need for larger development, ongoing

support, and hardware platform budgets. Unfortunately, some data ware-

house project teams have failed miserably because they focused all their

energy and resources on constructing the normalized structures rather than

allocating time to development of a presentation area that supports improved

business decision making. While we believe that enterprise-wide data consis-

tency is a fundamental goal of the data warehouse environment, there are

equally effective and less costly approaches than physically creating a normal-

ized set of tables in your staging area, if these structures don’t already exist.

It is acceptable to create a normalized database to support the staging processes;

however, this is not the end goal. The normalized structures must be off-limits to

user queries because they defeat understandability and performance. As soon as a

database supports query and presentation services, it must be considered part of the

data warehouse presentation area. By default, normalized databases are excluded

from the presentation area, which should be strictly dimensionally structured.

Regardless of whether we’re working with a series of flat files or a normalized

data structure in the staging area, the final step of the ETL process is the load-

ing of data. Loading in the data warehouse environment usually takes the

form of presenting the quality-assured dimensional tables to the bulk loading

facilities of each data mart. The target data mart must then index the newly

arrived data for query performance. When each data mart has been freshly

loaded, indexed, supplied with appropriate aggregates, and further quality

Dimensional Modeling Primer 9

assured, the user community is notified that the new data has been published.

Publishing includes communicating the nature of any changes that have

occurred in the underlying dimensions and new assumptions that have been

introduced into the measured or calculated facts.

Data Presentation

The data presentation area is where data is organized, stored, and made avail-

able for direct querying by users, report writers, and other analytical applica-

tions. Since the backroom staging area is off-limits, the presentation area is the

data warehouse as far as the business community is concerned. It is all the

business community sees and touches via data access tools. The prerelease

working title for the first edition of The Data Warehouse Toolkit originally was

Getting the Data Out. This is what the presentation area with its dimensional

models is all about.

We typically refer to the presentation area as a series of integrated data marts.

A data mart is a wedge of the overall presentation area pie. In its most sim-

plistic form, a data mart presents the data from a single business process.

These business processes cross the boundaries of organizational functions.

We have several strong opinions about the presentation area. First of all, we

insist that the data be presented, stored, and accessed in dimensional schemas.

Fortunately, the industry has matured to the point where we’re no longer

debating this mandate. The industry has concluded that dimensional model-

ing is the most viable technique for delivering data to data warehouse users.

Dimensional modeling is a new name for an old technique for making data-

bases simple and understandable. In case after case, beginning in the 1970s, IT

organizations, consultants, end users, and vendors have gravitated to a simple

dimensional structure to match the fundamental human need for simplicity.

Imagine a chief executive officer (CEO) who describes his or her business as,

“We sell products in various markets and measure our performance over

time.” As dimensional designers, we listen carefully to the CEO’s emphasis on

product, market, and time. Most people find it intuitive to think of this busi-

ness as a cube of data, with the edges labeled product, market, and time. We

can imagine slicing and dicing along each of these dimensions. Points inside

the cube are where the measurements for that combination of product, market,

and time are stored. The ability to visualize something as abstract as a set of

data in a concrete and tangible way is the secret of understandability. If this

perspective seems too simple, then good! A data model that starts by being

simple has a chance of remaining simple at the end of the design. A model that

starts by being complicated surely will be overly complicated at the end.

Overly complicated models will run slowly and be rejected by business users.

CHAPTER 1

10

Dimensional modeling is quite different from third-normal-form (3NF) mod-

eling. 3NF modeling is a design technique that seeks to remove data redun-

dancies. Data is divided into many discrete entities, each of which becomes a

table in the relational database. A database of sales orders might start off with

a record for each order line but turns into an amazingly complex spiderweb

diagram as a 3NF model, perhaps consisting of hundreds or even thousands of

normalized tables.

The industry sometimes refers to 3NF models as ER models. ER is an acronym

for entity relationship. Entity-relationship diagrams (ER diagrams or ERDs) are

drawings of boxes and lines to communicate the relationships between tables.

Both 3NF and dimensional models can be represented in ERDs because both

consist of joined relational tables; the key difference between 3NF and dimen-

sional models is the degree of normalization. Since both model types can be

presented as ERDs, we’ll refrain from referring to 3NF models as ER models;

instead, we’ll call them normalized models to minimize confusion.

Normalized modeling is immensely helpful to operational processing perfor-

mance because an update or insert transaction only needs to touch the data-

base in one place. Normalized models, however, are too complicated for data

warehouse queries. Users can’t understand, navigate, or remember normal-

ized models that resemble the Los Angeles freeway system. Likewise, rela-

tional database management systems (RDBMSs) can’t query a normalized

model efficiently; the complexity overwhelms the database optimizers, result-

ing in disastrous performance. The use of normalized modeling in the data

warehouse presentation area defeats the whole purpose of data warehousing,

namely, intuitive and high-performance retrieval of data.

There is a common syndrome in many large IT shops. It is a kind of sickness

that comes from overly complex data warehousing schemas. The symptoms

might include:

■■ A $10 million hardware and software investment that is performing only a

handful of queries per day

■■ An IT department that is forced into a kind of priesthood, writing all the

data warehouse queries

■■ Seemingly simple queries that require several pages of single-spaced

Structured Query Language (SQL) code

■■ A marketing department that is unhappy because it can’t access the sys-

tem directly (and still doesn’t know whether the company is profitable in

Schenectady)

■■ A restless chief information officer (CIO) who is determined to make some

changes if things don’t improve dramatically

Dimensional Modeling Primer 11

Fortunately, dimensional modeling addresses the problem of overly complex

schemas in the presentation area. A dimensional model contains the same infor-

mation as a normalized model but packages the data in a format whose design

goals are user understandability, query performance, and resilience to change.

Our second stake in the ground about presentation area data marts is that they

must contain detailed, atomic data. Atomic data is required to withstand

assaults from unpredictable ad hoc user queries. While the data marts also

may contain performance-enhancing summary data, or aggregates, it is not

sufficient to deliver these summaries without the underlying granular data in

a dimensional form. In other words, it is completely unacceptable to store only

summary data in dimensional models while the atomic data is locked up in

normalized models. It is impractical to expect a user to drill down through

dimensional data almost to the most granular level and then lose the benefits

of a dimensional presentation at the final step. In Chapter 16 we will see that

any user application can descend effortlessly to the bedrock granular data by

using aggregate navigation, but only if all the data is available in the same,

consistent dimensional form. While users of the data warehouse may look

infrequently at a single line item on an order, they may be very interested in

last week’s orders for products of a given size (or flavor, package type, or man-

ufacturer) for customers who first purchased within the last six months (or

reside in a given state or have certain credit terms). We need the most finely

grained data in our presentation area so that users can ask the most precise

questions possible. Because users’ requirements are unpredictable and con-

stantly changing, we must provide access to the exquisite details so that they

can be rolled up to address the questions of the moment.

All the data marts must be built using common dimensions and facts, which

we refer to as conformed. This is the basis of the data warehouse bus architec-

ture, which we’ll elaborate on in Chapter 3. Adherence to the bus architecture

is our third stake in the ground regarding the presentation area. Without

shared, conformed dimensions and facts, a data mart is a standalone stovepipe

application. Isolated stovepipe data marts that cannot be tied together are the

bane of the data warehouse movement. They merely perpetuate incompatible

views of the enterprise. If you have any hope of building a data warehouse

that is robust and integrated, you must make a commitment to the bus archi-

tecture. In this book we will illustrate that when data marts have been

designed with conformed dimensions and facts, they can be combined and

used together. The data warehouse presentation area in a large enterprise data

warehouse ultimately will consist of 20 or more very similar-looking data

marts. The dimensional models in these data marts also will look quite similar.

Each data mart may contain several fact tables, each with 5 to 15 dimension

tables. If the design has been done correctly, many of these dimension tables

will be shared from fact table to fact table.

CHAPTER 1

12

Using the bus architecture is the secret to building distributed data warehouse

systems. Let’s be real—most of us don’t have the budget, time, or political

power to build a fully centralized data warehouse. When the bus architecture

is used as a framework, we can allow the enterprise data warehouse to

develop in a decentralized (and far more realistic) way.

Data in the queryable presentation area of the data warehouse must be dimen-

sional, must be atomic, and must adhere to the data warehouse bus architecture.

If the presentation area is based on a relational database, then these dimen-

sionally modeled tables are referred to as star schemas. If the presentation area

is based on multidimensional database or online analytic processing (OLAP)

technology, then the data is stored in cubes. While the technology originally

wasn’t referred to as OLAP, many of the early decision support system ven-

dors built their systems around the cube concept, so today’s OLAP vendors

naturally are aligned with the dimensional approach to data warehousing.

Dimensional modeling is applicable to both relational and multidimensional

databases. Both have a common logical design with recognizable dimensions;

however, the physical implementation differs. Fortunately, most of the recom-

mendations in this book pertain, regardless of the database platform. While

the capabilities of OLAP technology are improving continuously, at the time of

this writing, most large data marts are still implemented on relational data-

bases. In addition, most OLAP cubes are sourced from or drill into relational

dimensional star schemas using a variation of aggregate navigation. For these

reasons, most of the specific discussions surrounding the presentation area are

couched in terms of a relational platform.

Contrary to the original religion of the data warehouse, modern data marts

may well be updated, sometimes frequently. Incorrect data obviously should

be corrected. Changes in labels, hierarchies, status, and corporate ownership

often trigger necessary changes in the original data stored in the data marts

that comprise the data warehouse, but in general, these are managed-load

updates, not transactional updates.

Data Access Tools

The final major component of the data warehouse environment is the data

access tool(s). We use the term tool loosely to refer to the variety of capabilities

that can be provided to business users to leverage the presentation area for

analytic decision making. By definition, all data access tools query the data in

the data warehouse’s presentation area. Querying, obviously, is the whole

point of using the data warehouse.

Dimensional Modeling Primer 13

A data access tool can be as simple as an ad hoc query tool or as complex as a

sophisticated data mining or modeling application. Ad hoc query tools, as

powerful as they are, can be understood and used effectively only by a small

percentage of the potential data warehouse business user population. The

majority of the business user base likely will access the data via prebuilt

parameter-driven analytic applications. Approximately 80 to 90 percent of the

potential users will be served by these canned applications that are essentially

finished templates that do not require users to construct relational queries

directly. Some of the more sophisticated data access tools, like modeling or

forecasting tools, actually may upload their results back into operational

source systems or the staging/presentation areas of the data warehouse.

Additional Considerations

Before we leave the discussion of data warehouse components, there are sev-

eral other concepts that warrant discussion.

Metadata

Metadata is all the information in the data warehouse environment that is not

the actual data itself. Metadata is akin to an encyclopedia for the data ware-

house. Data warehouse teams often spend an enormous amount of time talk-

ing about, worrying about, and feeling guilty about metadata. Since most

developers have a natural aversion to the development and orderly filing of

documentation, metadata often gets cut from the project plan despite every-

one’s acknowledgment that it is important.

Metadata comes in a variety of shapes and forms to support the disparate

needs of the data warehouse’s technical, administrative, and business user

groups. We have operational source system metadata including source

schemas and copybooks that facilitate the extraction process. Once data is in

the staging area, we encounter staging metadata to guide the transformation

and loading processes, including staging file and target table layouts, trans-

formation and cleansing rules, conformed dimension and fact definitions,

aggregation definitions, and ETL transmission schedules and run-log results.

Even the custom programming code we write in the data staging area is meta-

data.

Metadata surrounding the warehouse DBMS accounts for such items as the

system tables, partition settings, indexes, view definitions, and DBMS-level

security privileges and grants. Finally, the data access tool metadata identifies

business names and definitions for the presentation area’s tables and columns

as well as constraint filters, application template specifications, access and

usage statistics, and other user documentation. And of course, if we haven’t

CHAPTER 1

14

included it already, don’t forget all the security settings, beginning with source

transactional data and extending all the way to the user’s desktop.

The ultimate goal is to corral, catalog, integrate, and then leverage these dis-

parate varieties of metadata, much like the resources of a library. Suddenly, the

effort to build dimensional models appears to pale in comparison. However,

just because the task looms large, we can’t simply ignore the development of a

metadata framework for the data warehouse. We need to develop an overall

metadata plan while prioritizing short-term deliverables, including the pur-

chase or construction of a repository for keeping track of all the metadata.

Operational Data Store

Some of you probably are wondering where the operational data store (ODS)

fits in our warehouse components diagram. Since there’s no single universal

definition for the ODS, if and where it belongs depend on your situation. ODSs

are frequently updated, somewhat integrated copies of operational data. The

frequency of update and degree of integration of an ODS vary based on the

specific requirements. In any case, the O is the operative letter in the ODS

acronym.

Most commonly, an ODS is implemented to deliver operational reporting,

especially when neither the legacy nor more modern on-line transaction pro-

cessing (OLTP) systems provide adequate operational reports. These reports

are characterized by a limited set of fixed queries that can be hard-wired in a

reporting application. The reports address the organization’s more tactical

decision-making requirements. Performance-enhancing aggregations, signifi-

cant historical time series, and extensive descriptive attribution are specifically

excluded from the ODS. The ODS as a reporting instance may be a stepping-

stone to feed operational data into the warehouse.

In other cases, ODSs are built to support real-time interactions, especially in cus-

tomer relationship management (CRM) applications such as accessing your

travel itinerary on a Web site or your service history when you call into customer

support. The traditional data warehouse typically is not in a position to support

the demand for near-real-time data or immediate response times. Similar to the

operational reporting scenario, data inquiries to support these real-time interac-

tions have a fixed structure. Interestingly, this type of ODS sometimes leverages

information from the data warehouse, such as a customer service call center

application that uses customer behavioral information from the data warehouse

to precalculate propensity scores and store them in the ODS.

In either scenario, the ODS can be either a third physical system sitting between

the operational systems and the data warehouse or a specially administered hot

partition of the data warehouse itself. Every organization obviously needs

Dimensional Modeling Primer 15

operational systems. Likewise, every organization would benefit from a data

warehouse. The same cannot be said about a physically distinct ODS unless the

other two systems cannot answer your immediate operational questions.

Clearly, you shouldn’t allocate resources to construct a third physical system

unless your business needs cannot be supported by either the operational data-

collection system or the data warehouse. For these reasons, we believe that the

trend in data warehouse design is to deliver the ODS as a specially adminis-

tered portion of the conventional data warehouse. We will further discuss hot-

partition-style ODSs in Chapter 5.

Finally, before we leave this topic, some have defined the ODS to mean the

place in the data warehouse where we store granular atomic data. We believe

that this detailed data should be considered a natural part of the data ware-

house’s presentation area and not a separate entity. Beginning in Chapter 2, we

will show how the lowest-level transactions in a business are the foundation

for the presentation area of the data warehouse.

Dimensional Modeling Vocabulary

Throughout this book we will refer repeatedly to fact and dimension tables.

Contrary to popular folklore, Ralph Kimball didn’t invent this terminology. As

best as we can determine, the terms dimensions and facts originated from a joint

research project conducted by General Mills and Dartmouth University in the

1960s. In the 1970s, both AC Nielsen and IRI used these terms consistently to

describe their syndicated data offerings, which could be described accurately

today as dimensional data marts for retail sales data. Long before simplicity

was a lifestyle trend, the early database syndicators gravitated to these con-

cepts for simplifying the presentation of analytic information. They under-

stood that a database wouldn’t be used unless it was packaged simply.

It is probably accurate to say that a single person did not invent the dimensional ap-

proach. It is an irresistible force in the design of databases that will always result

when the designer places understandability and performance as the highest goals.

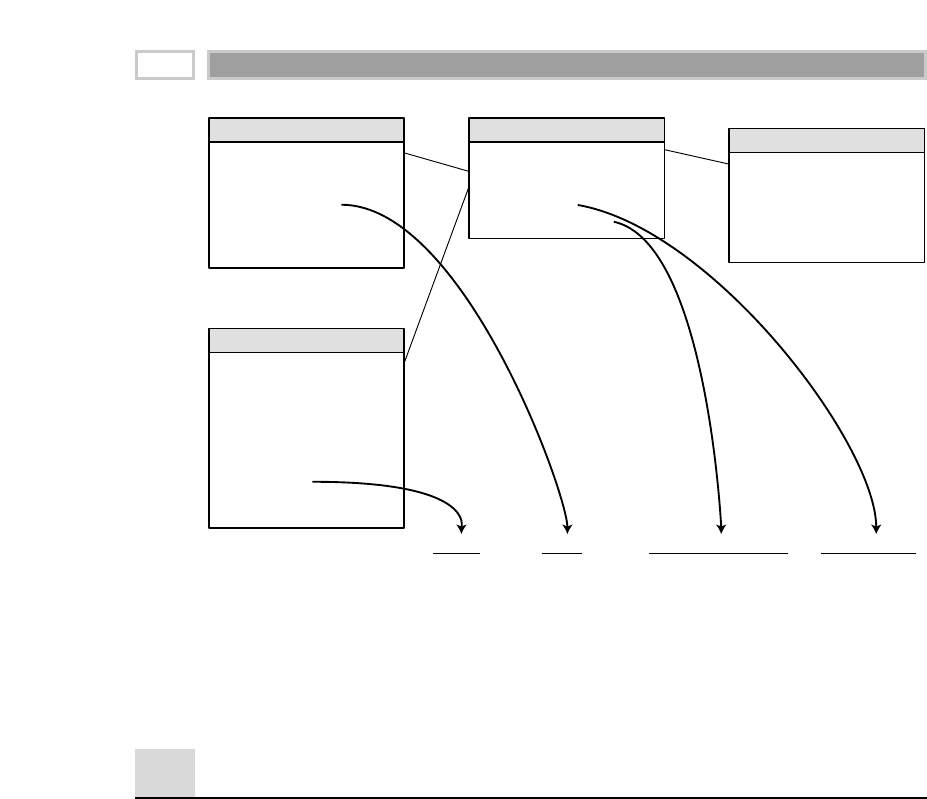

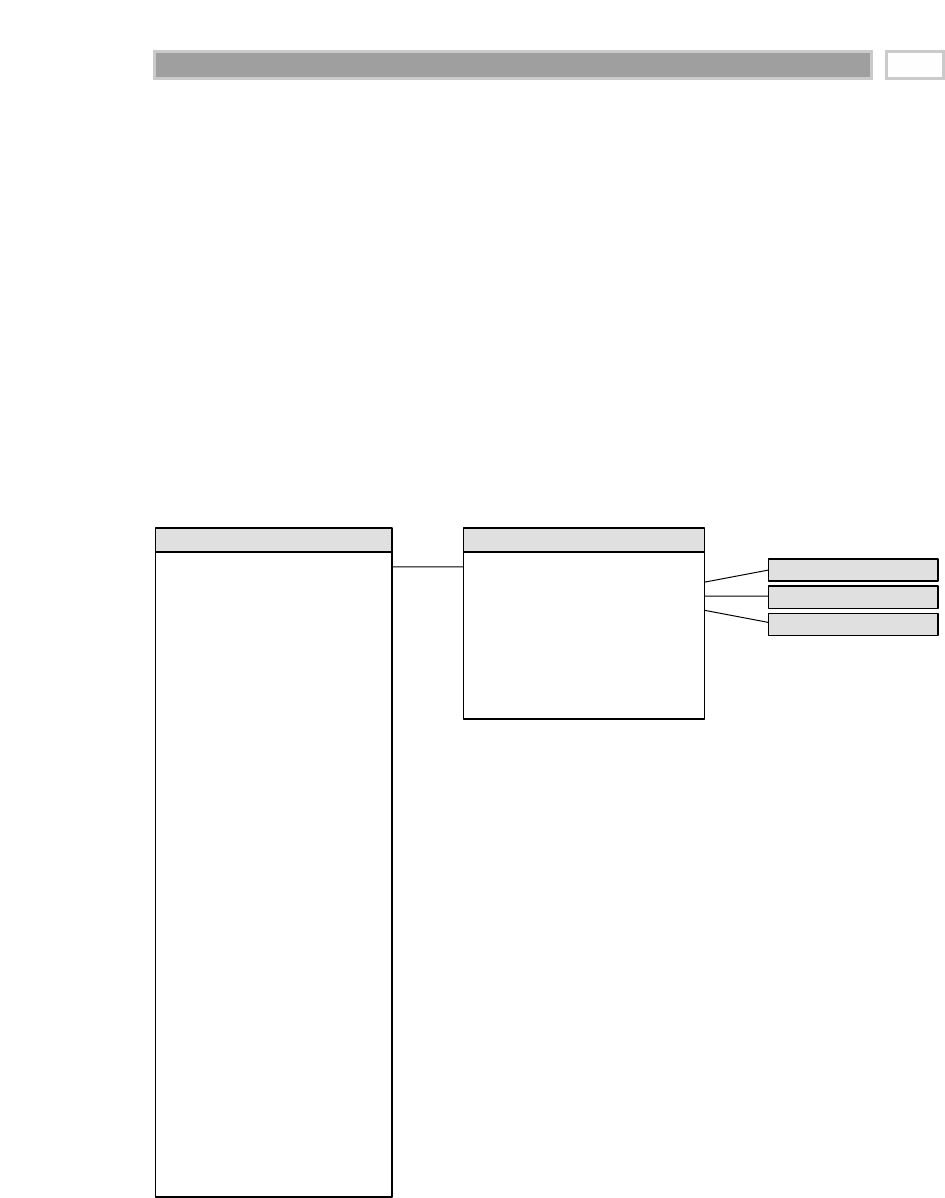

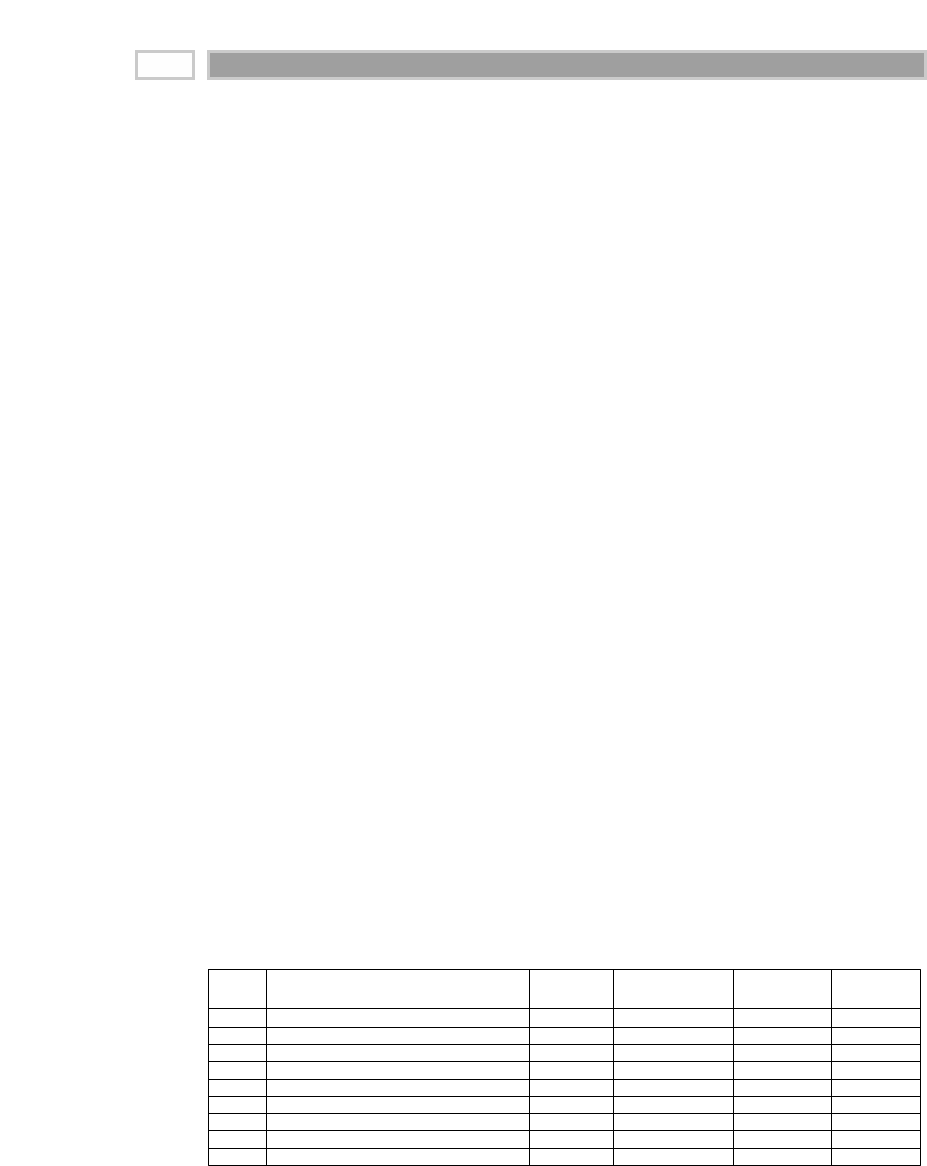

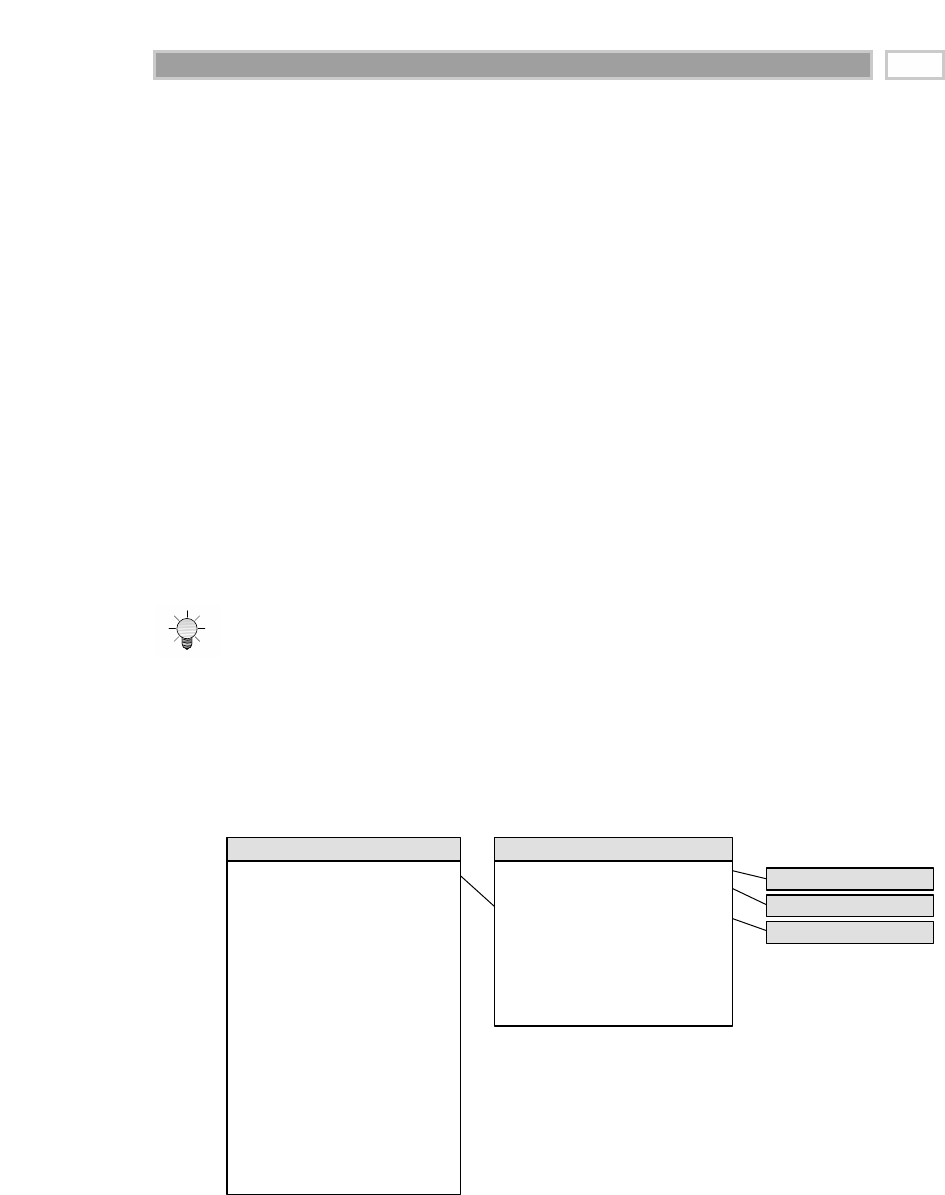

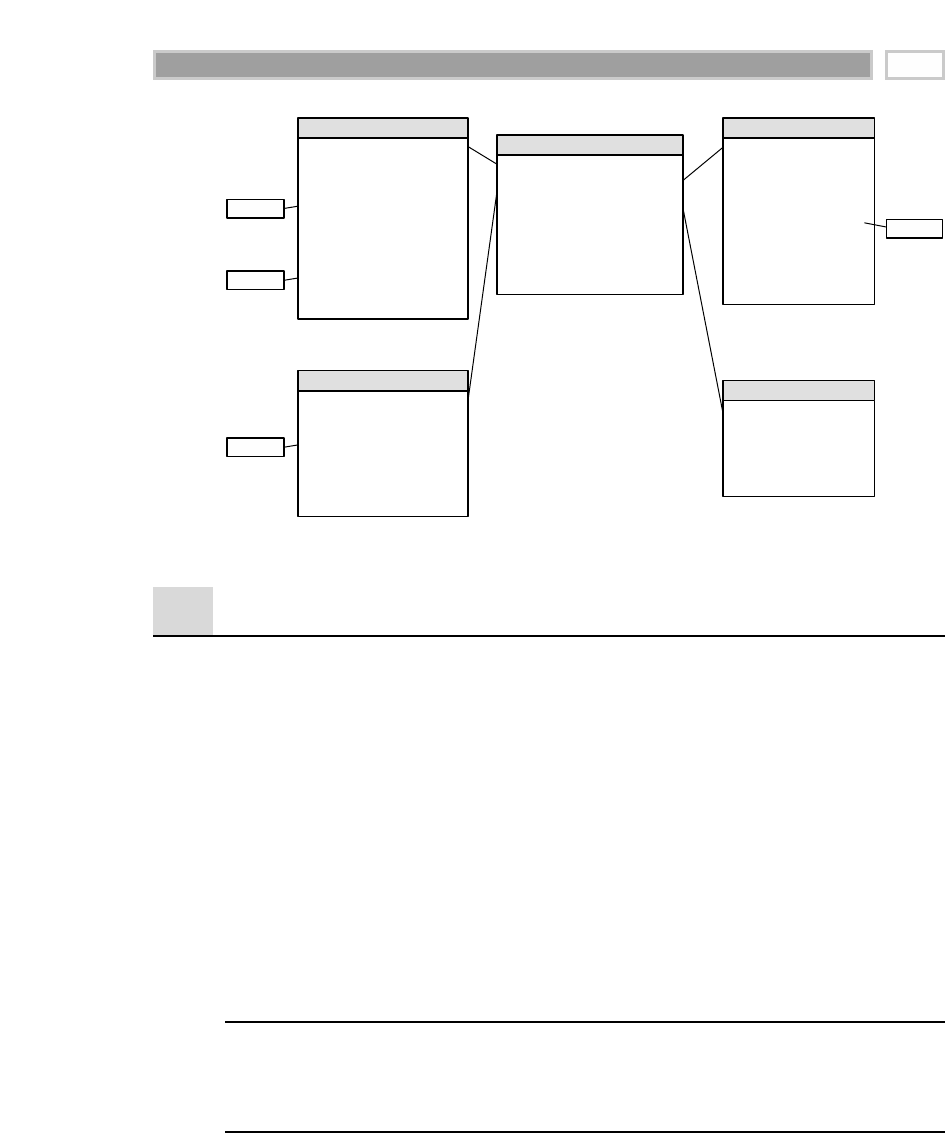

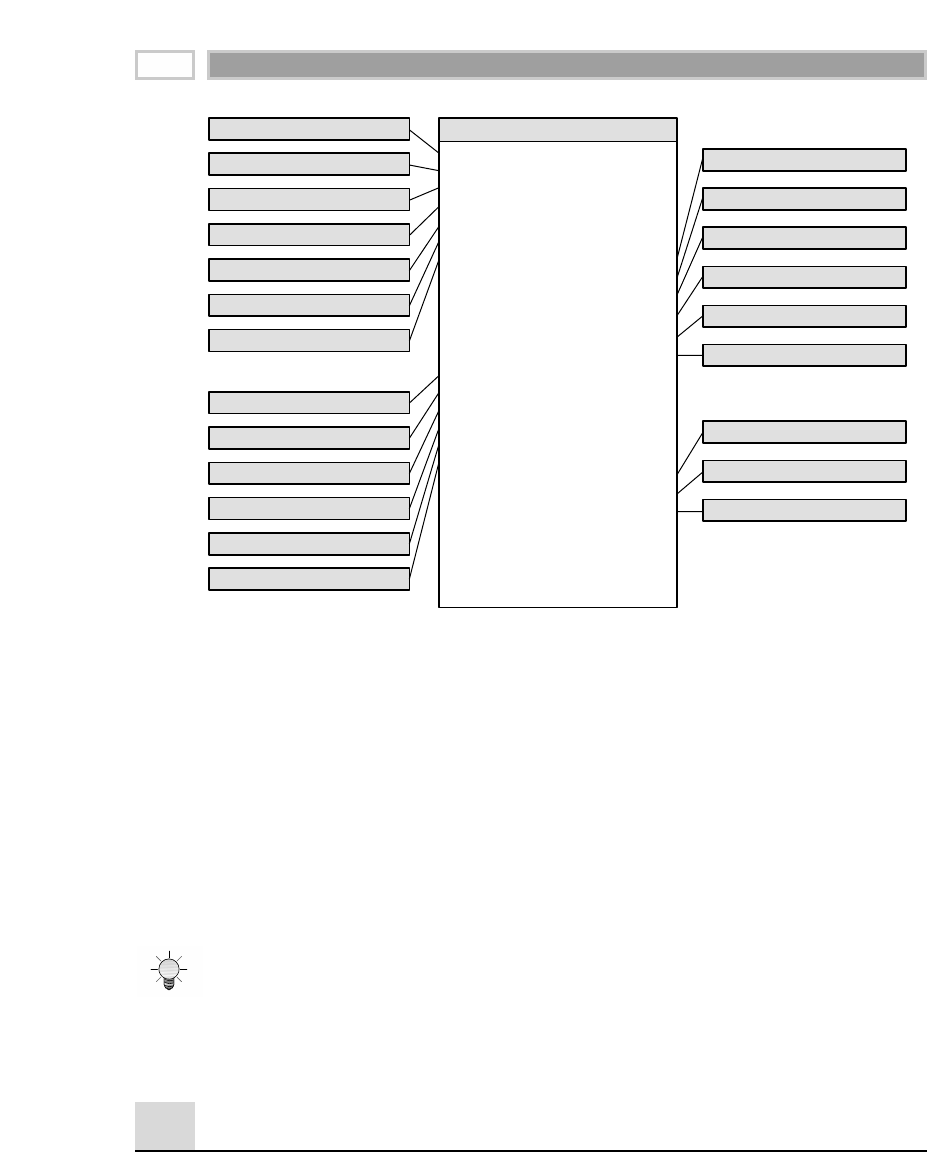

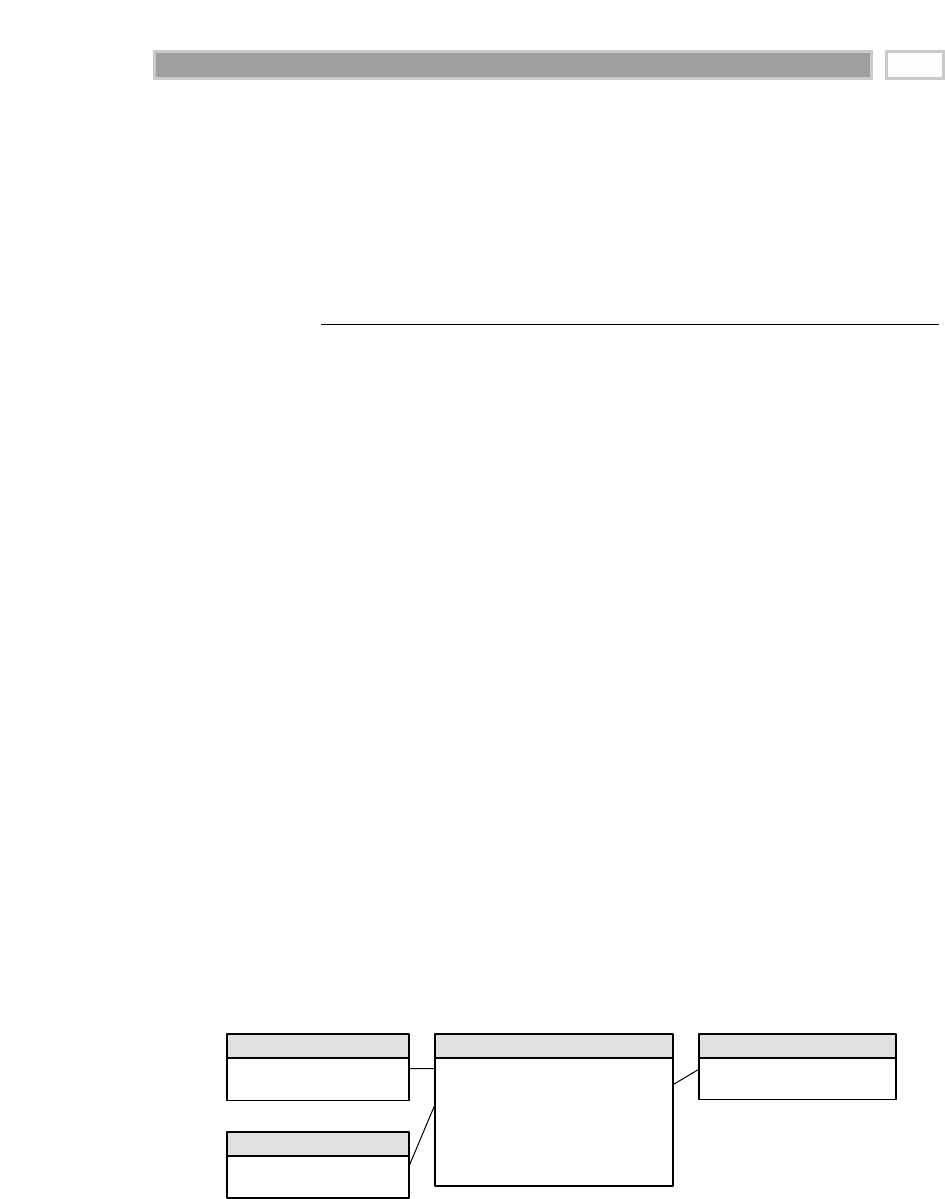

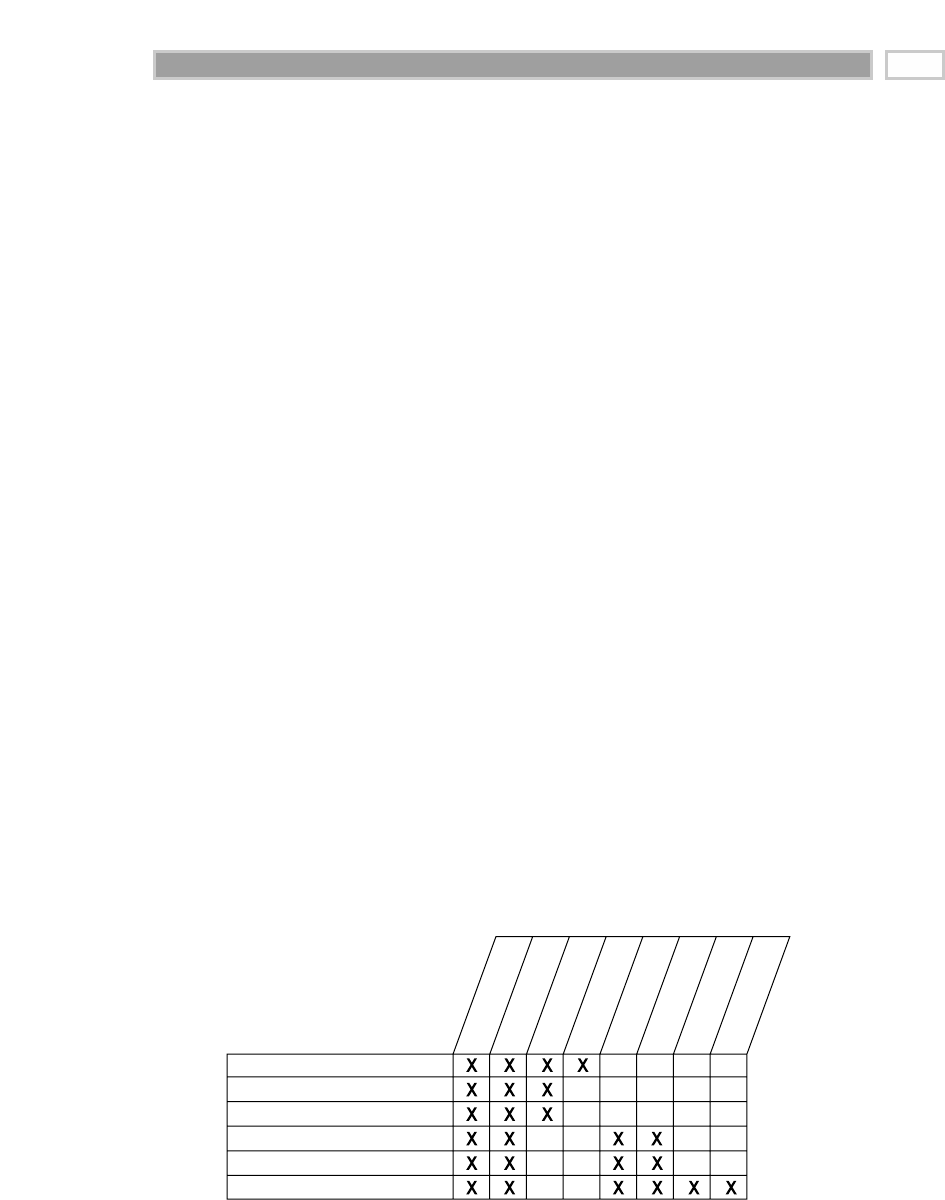

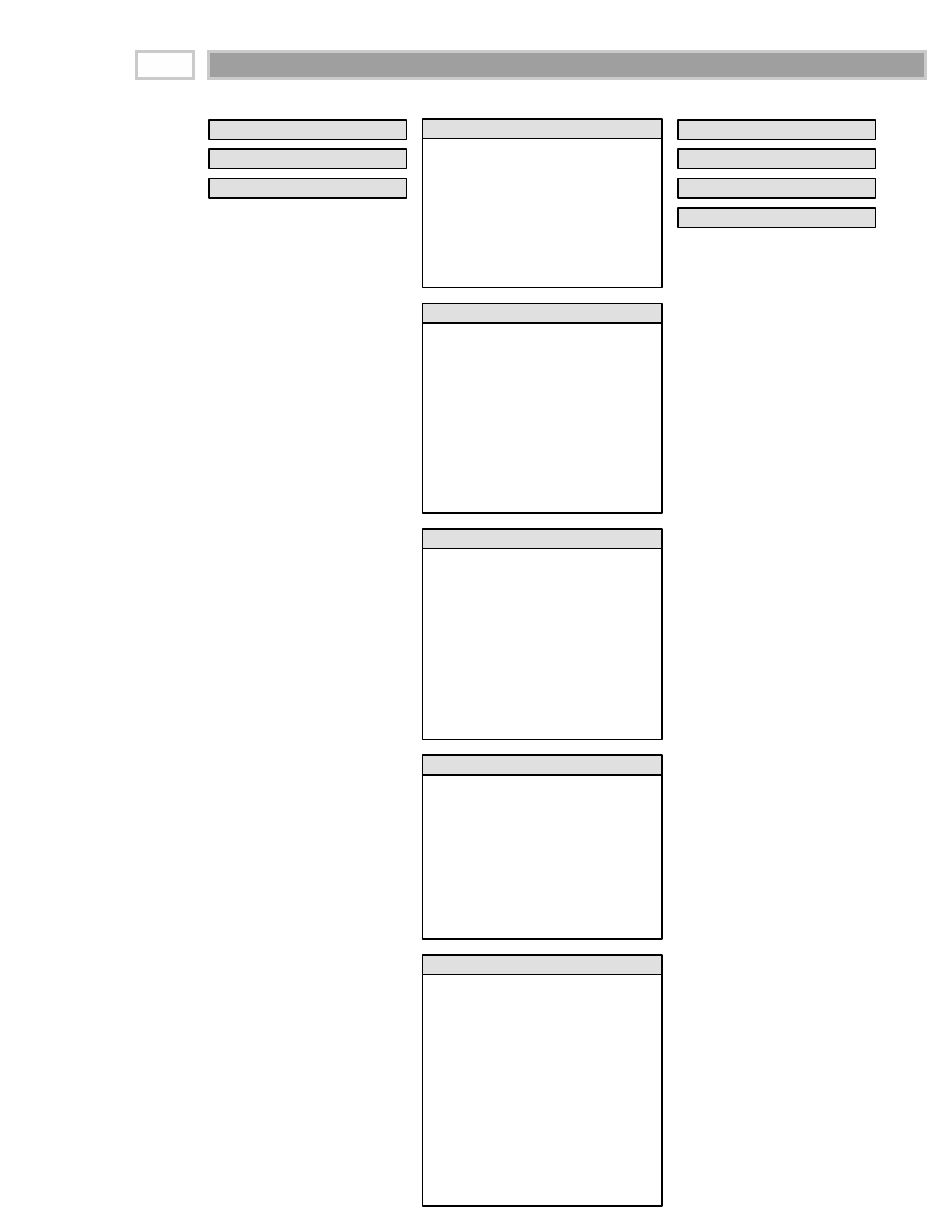

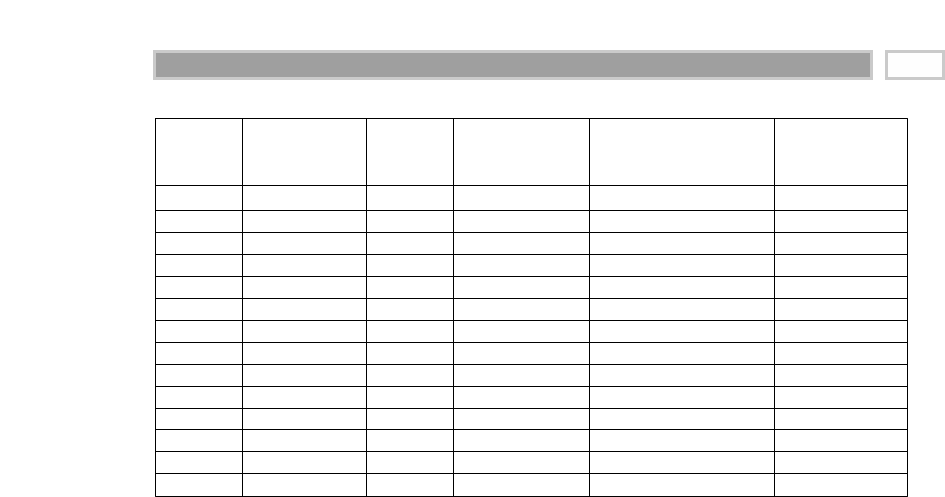

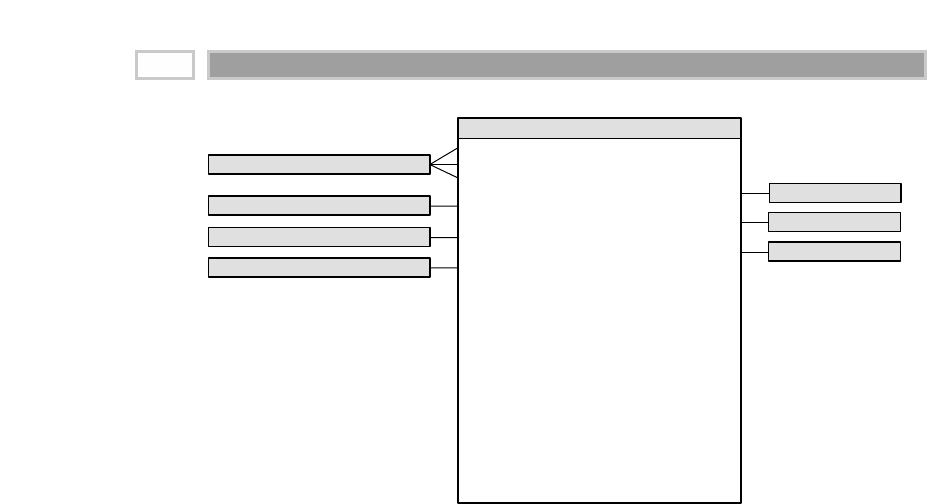

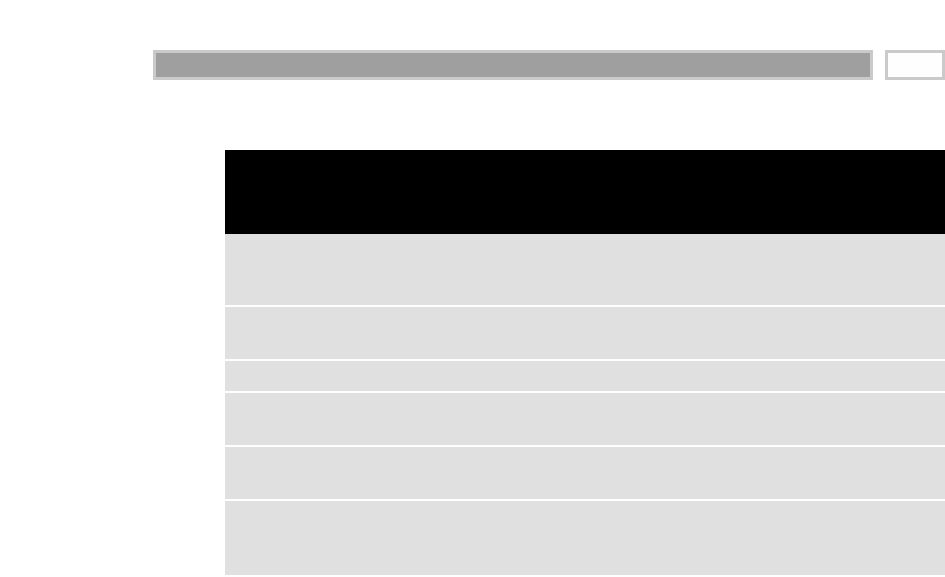

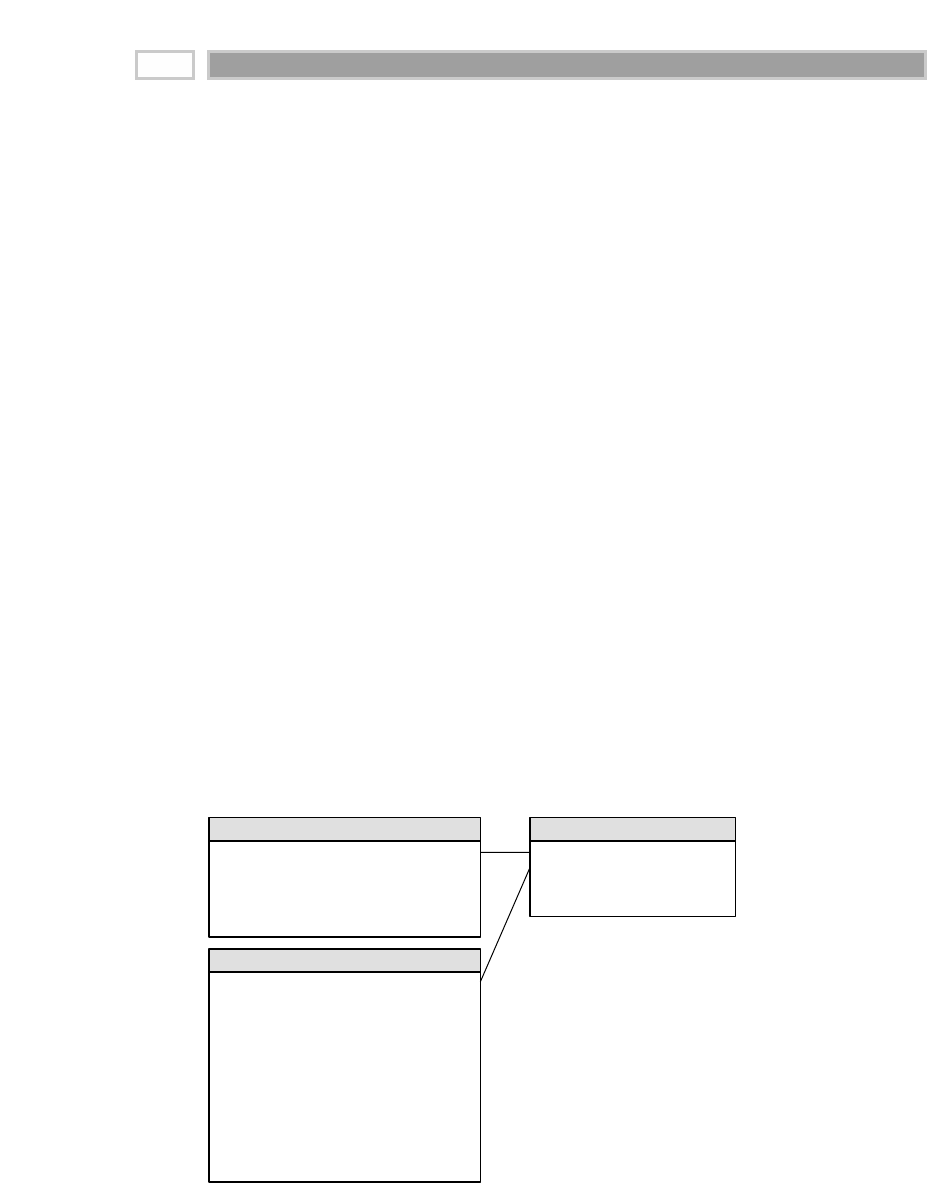

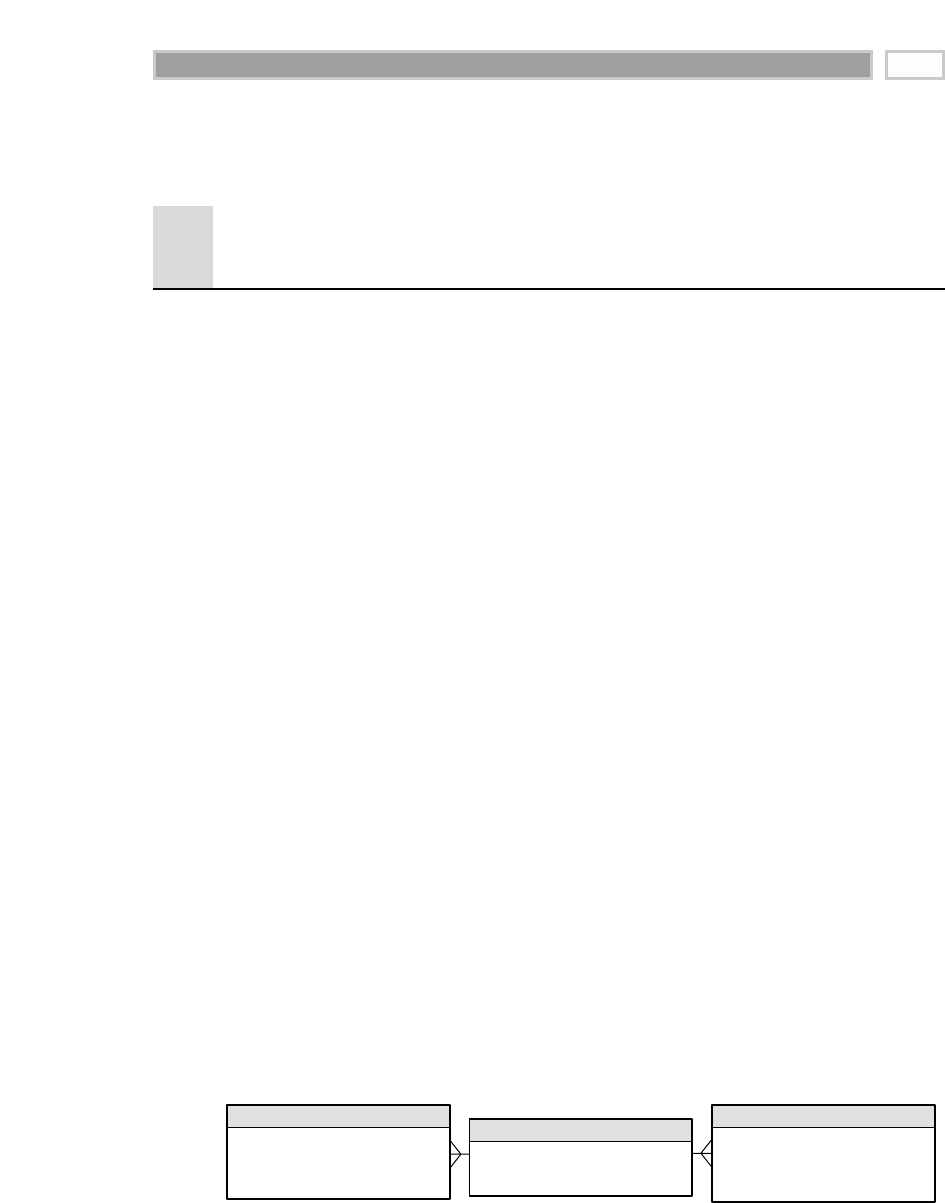

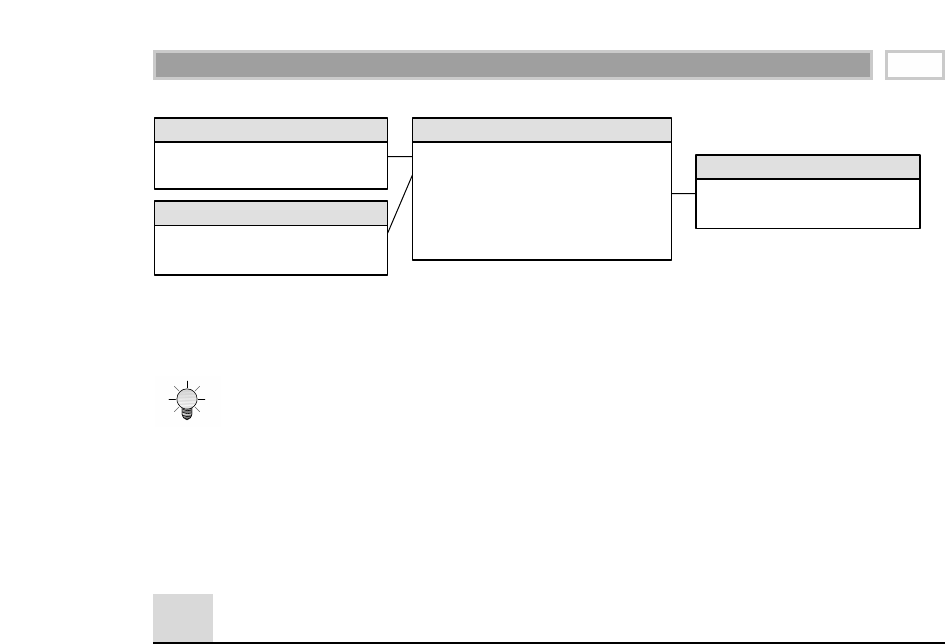

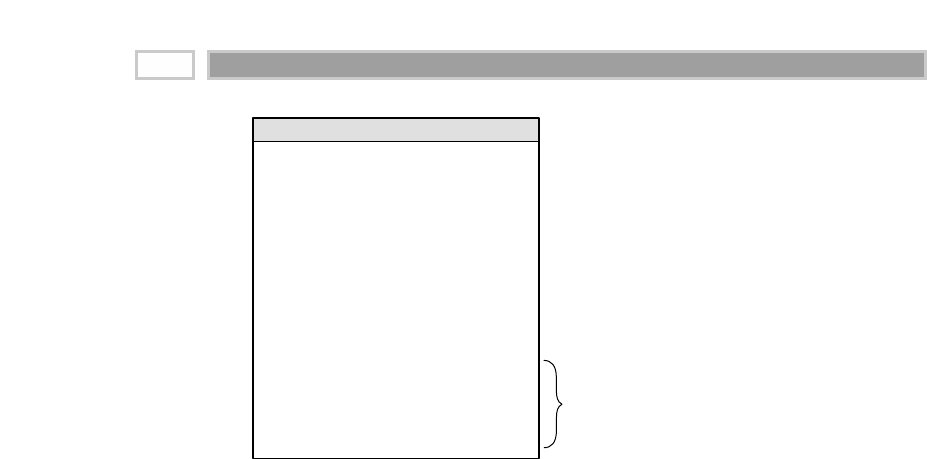

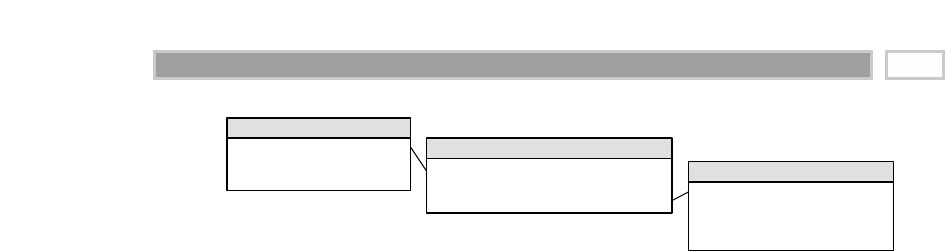

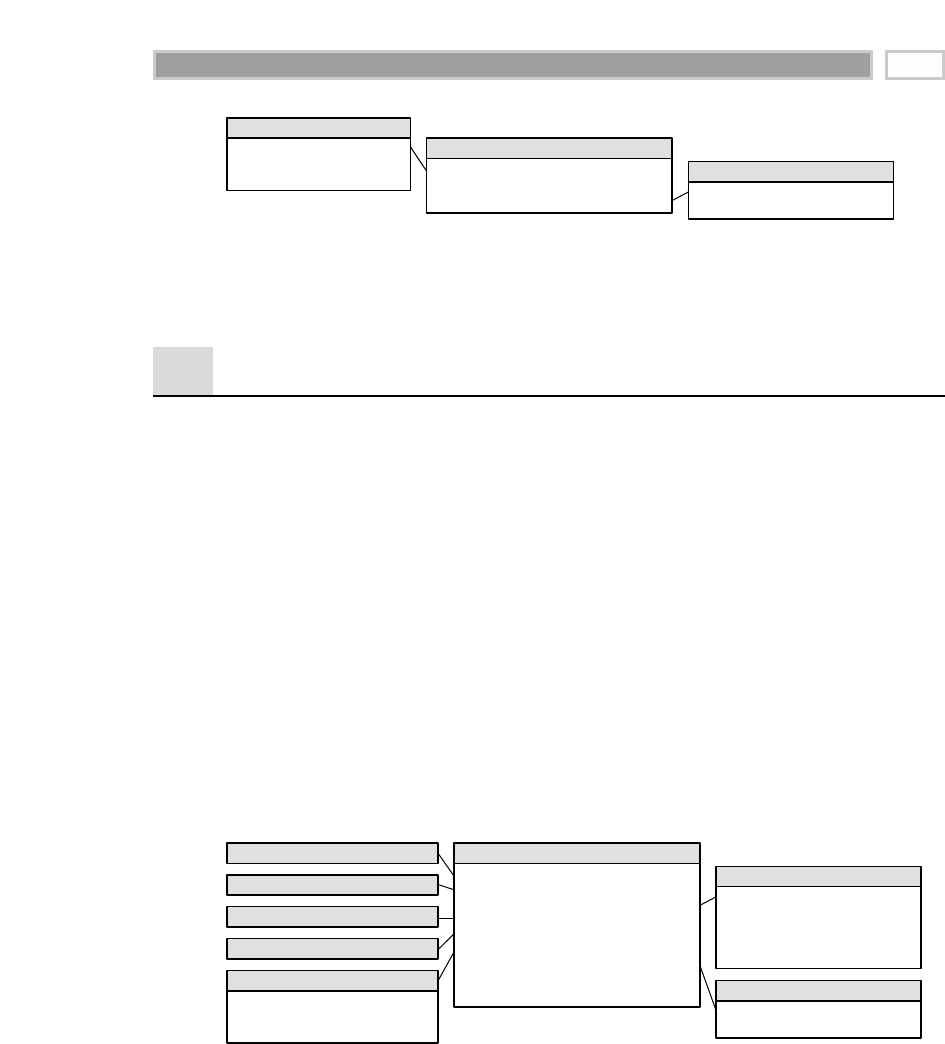

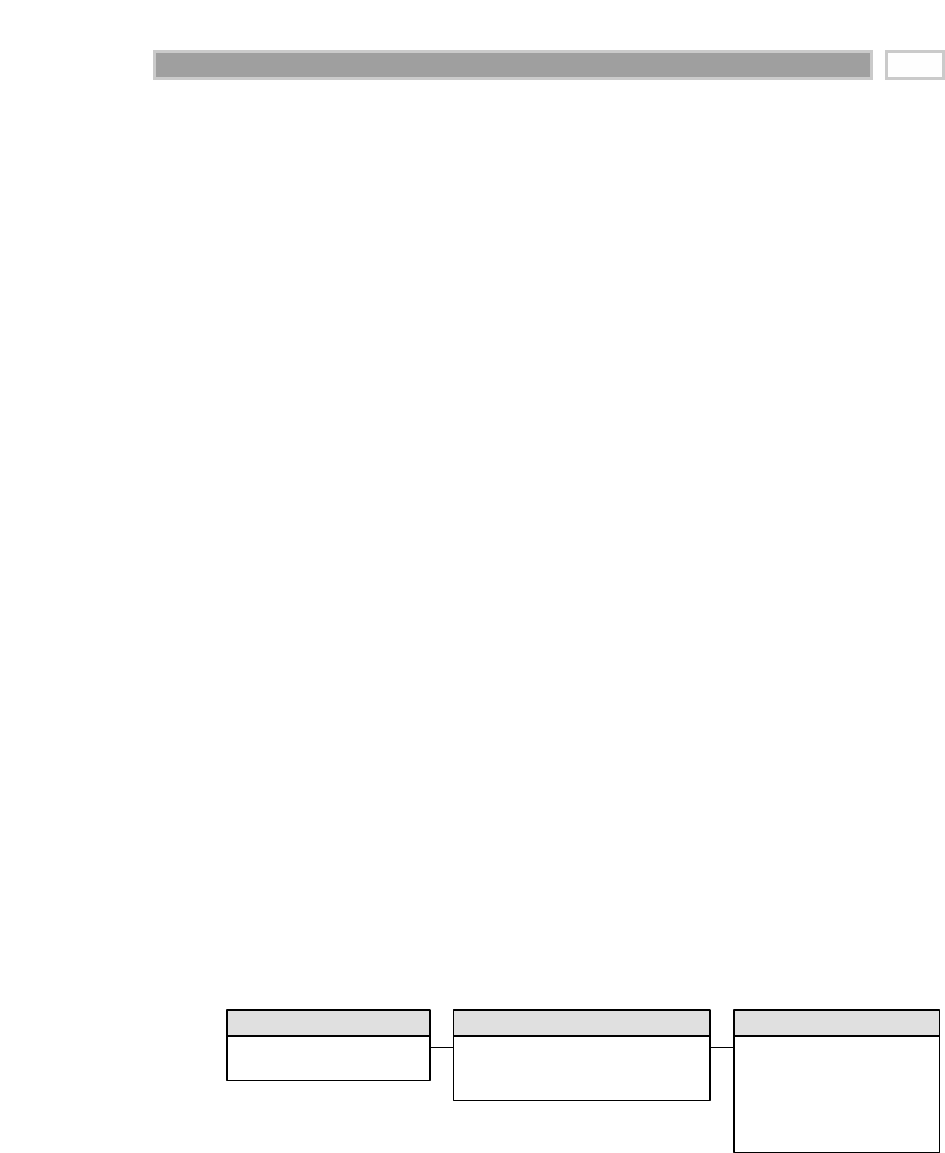

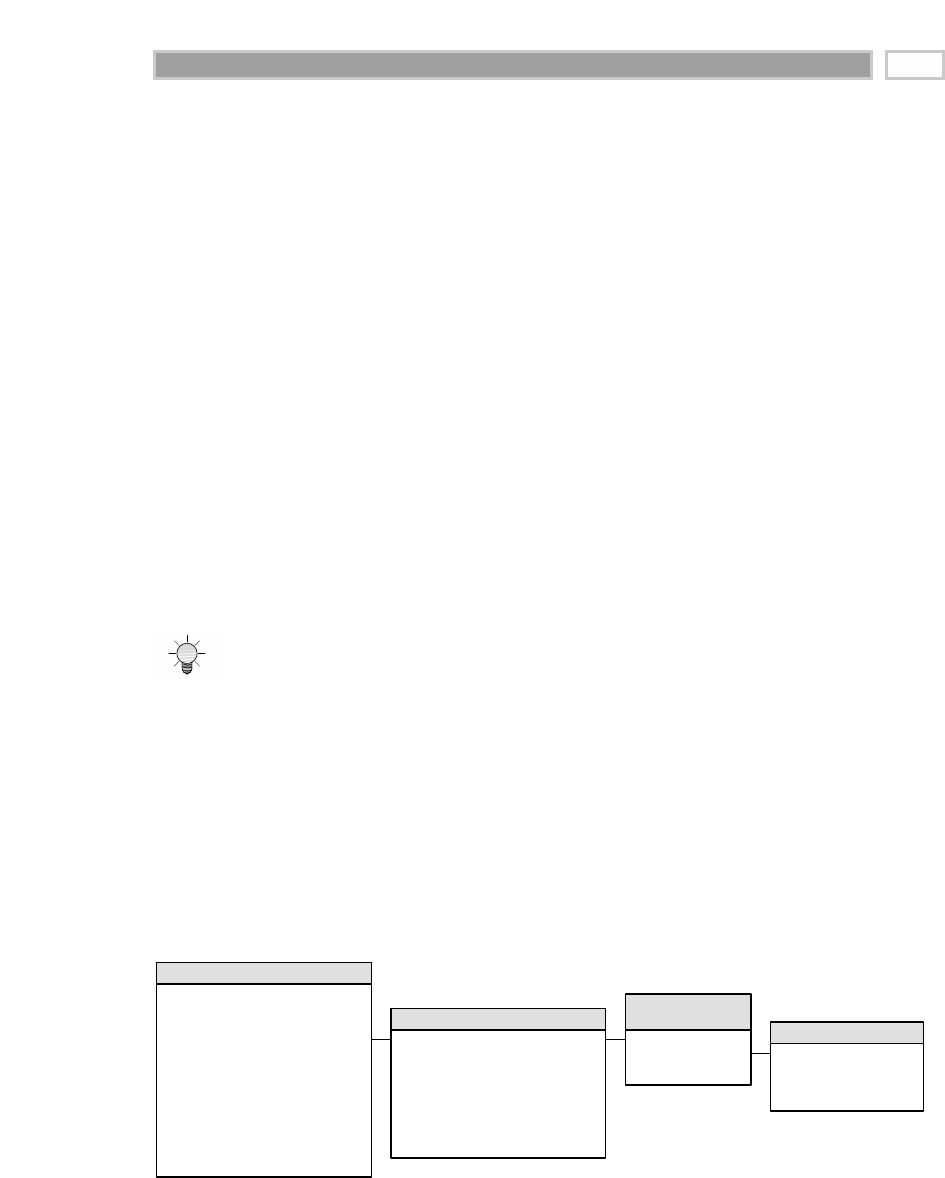

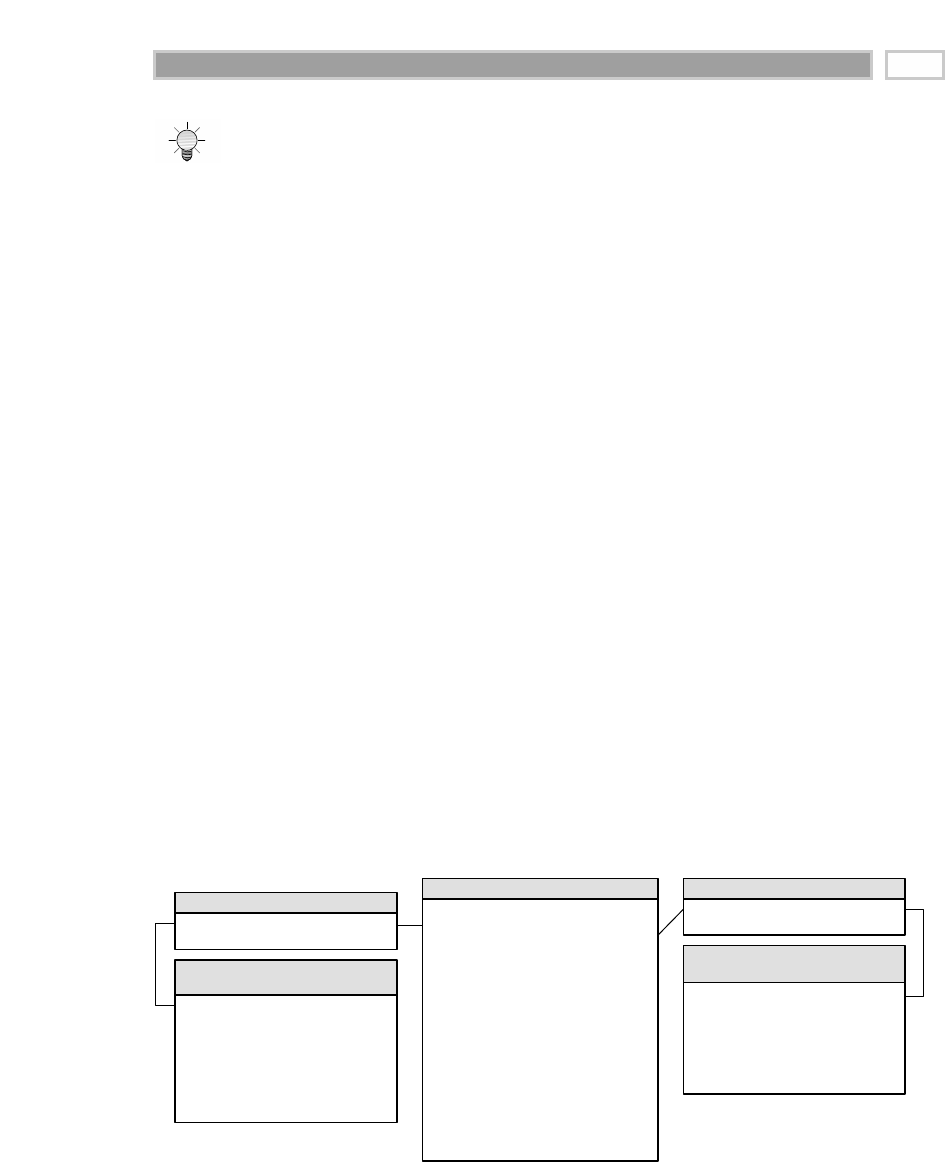

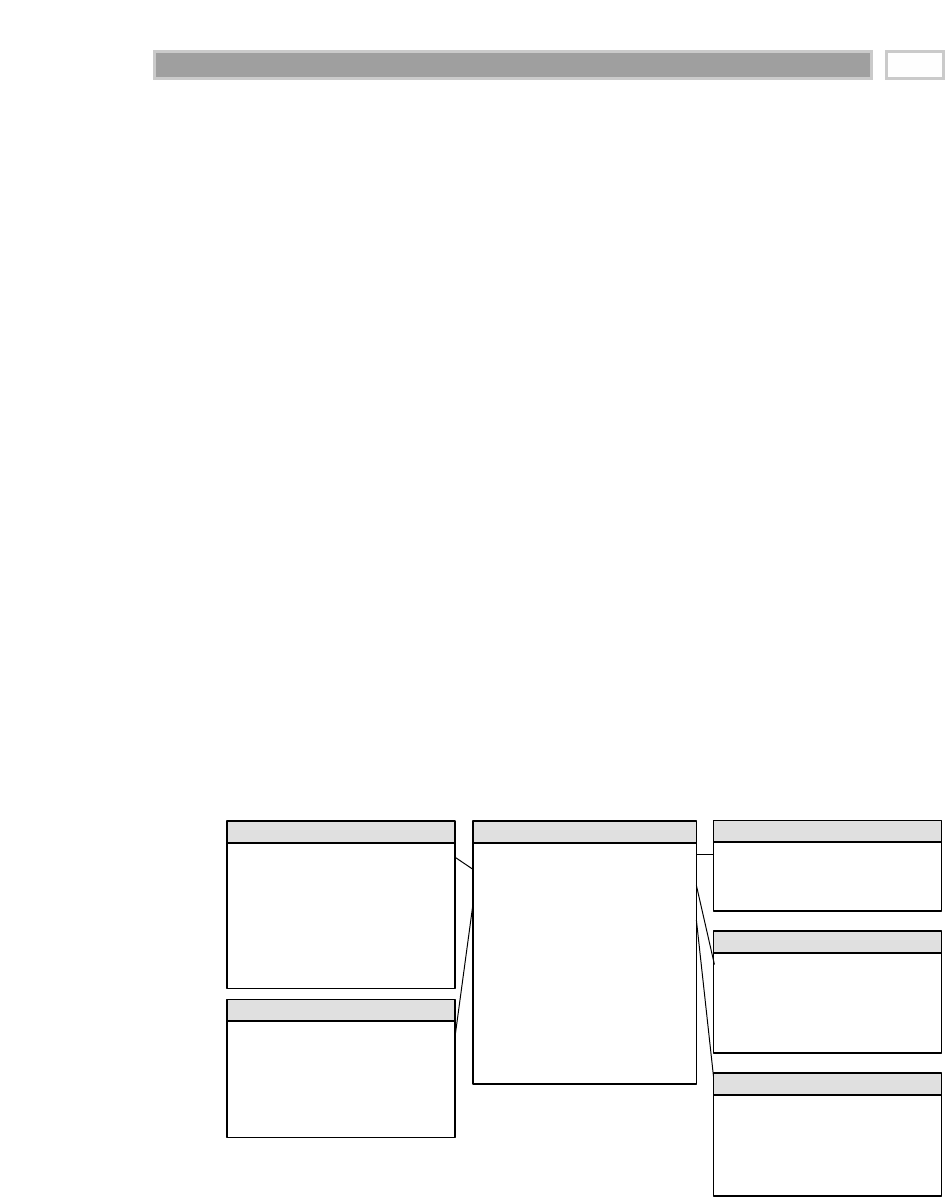

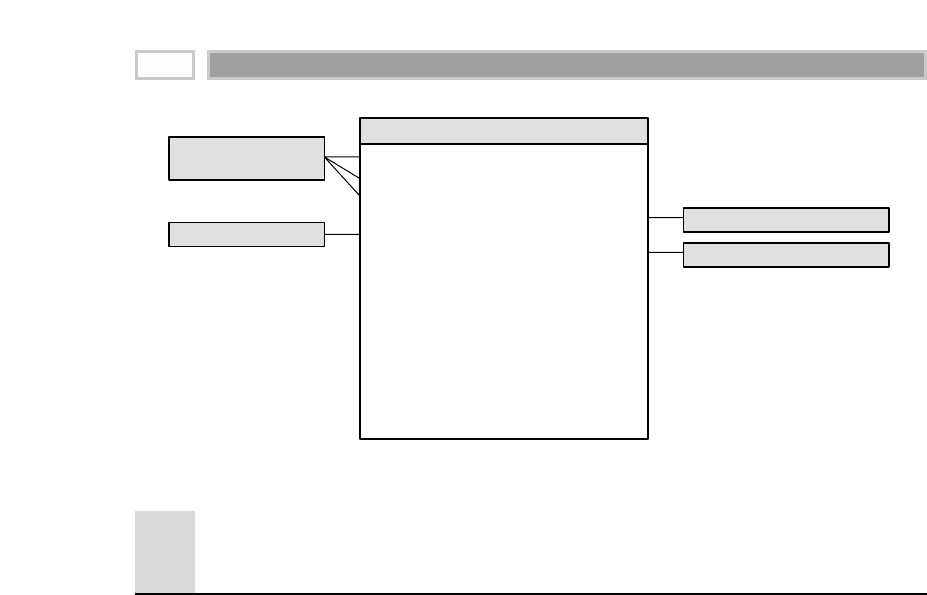

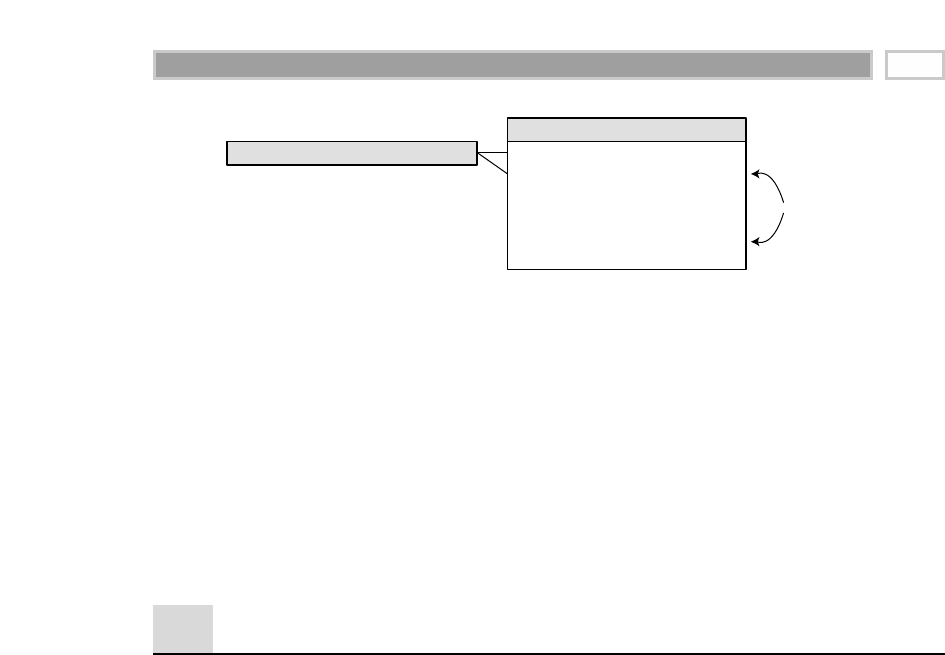

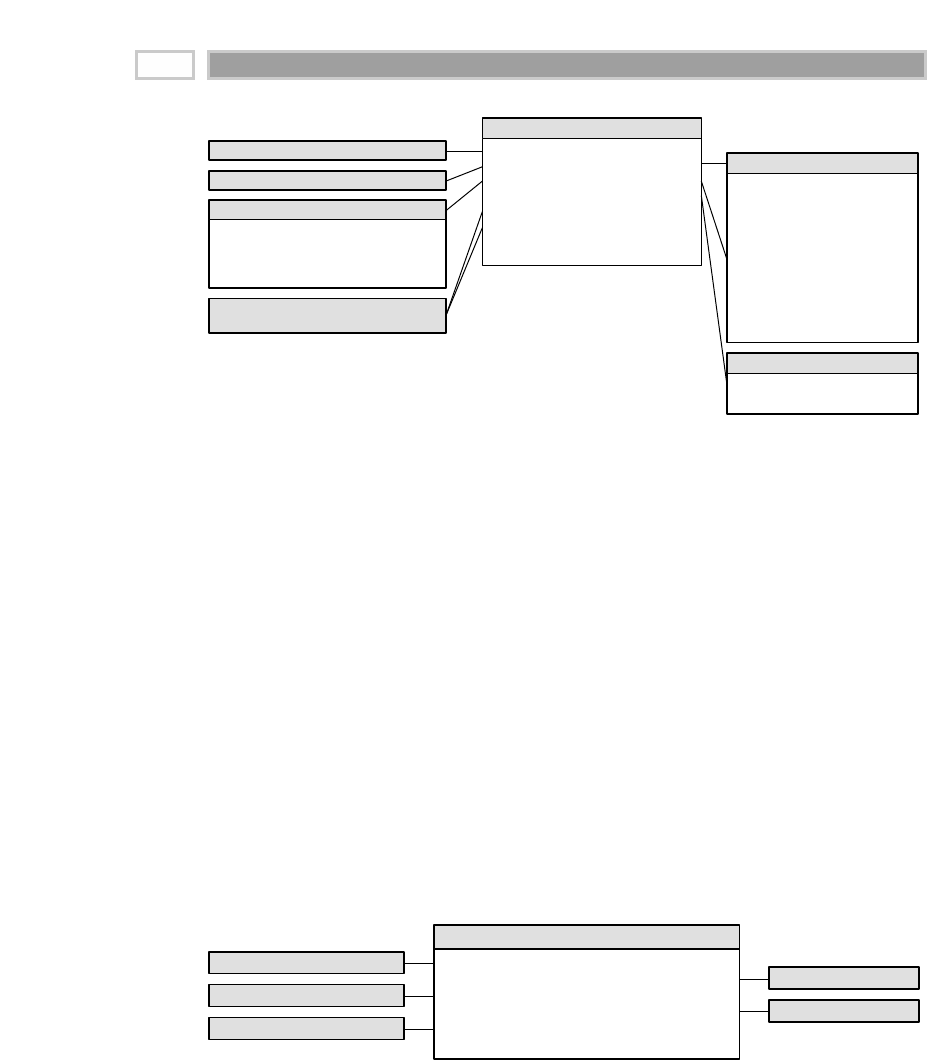

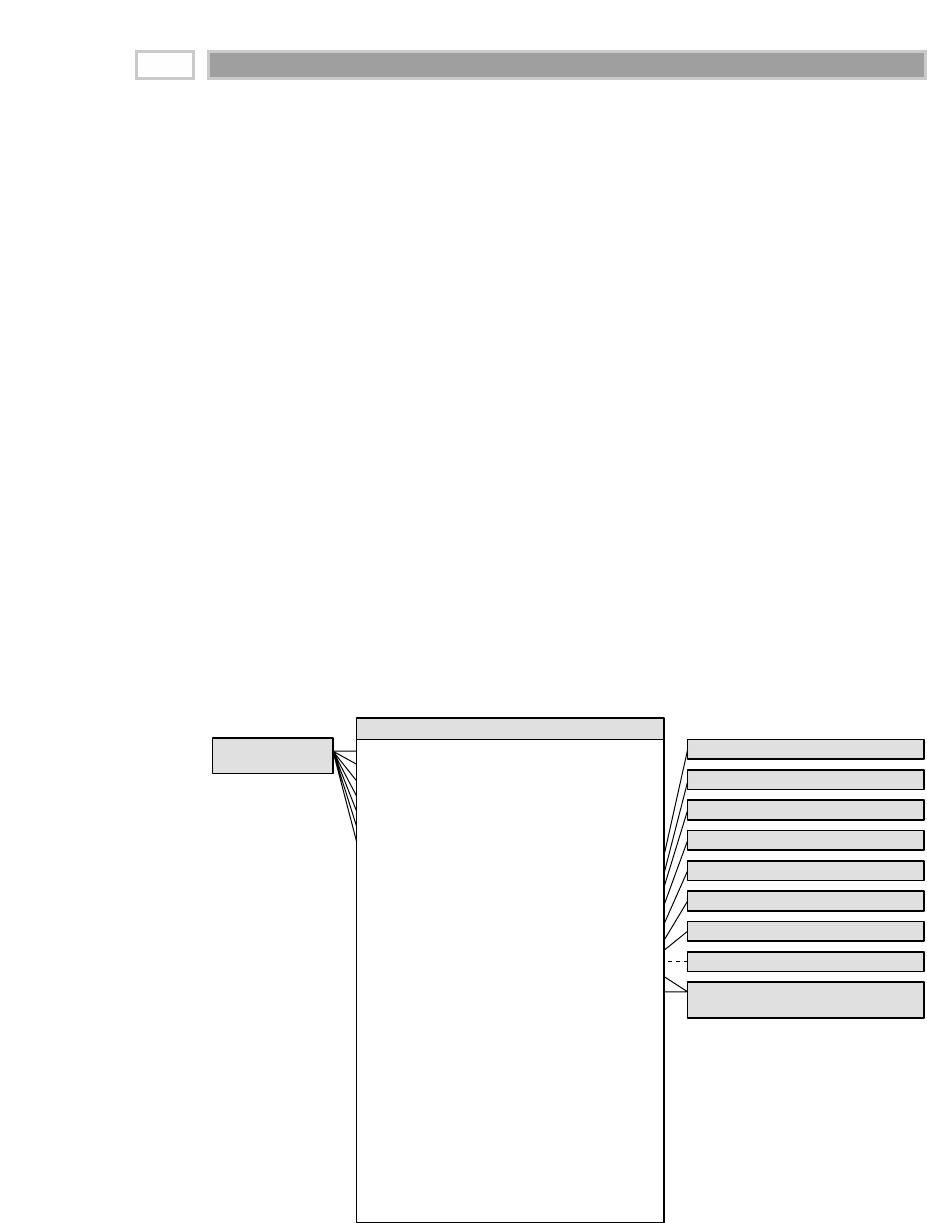

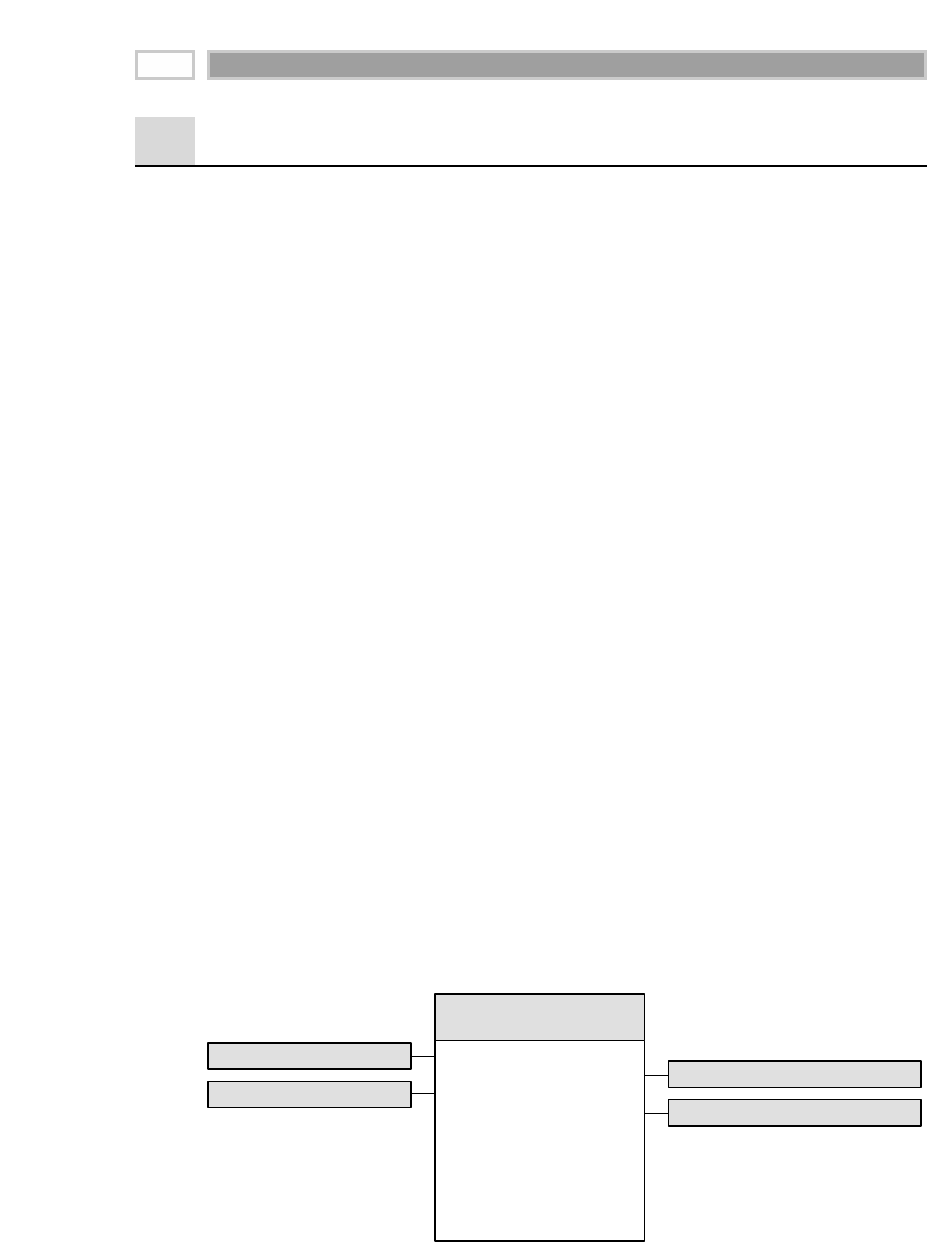

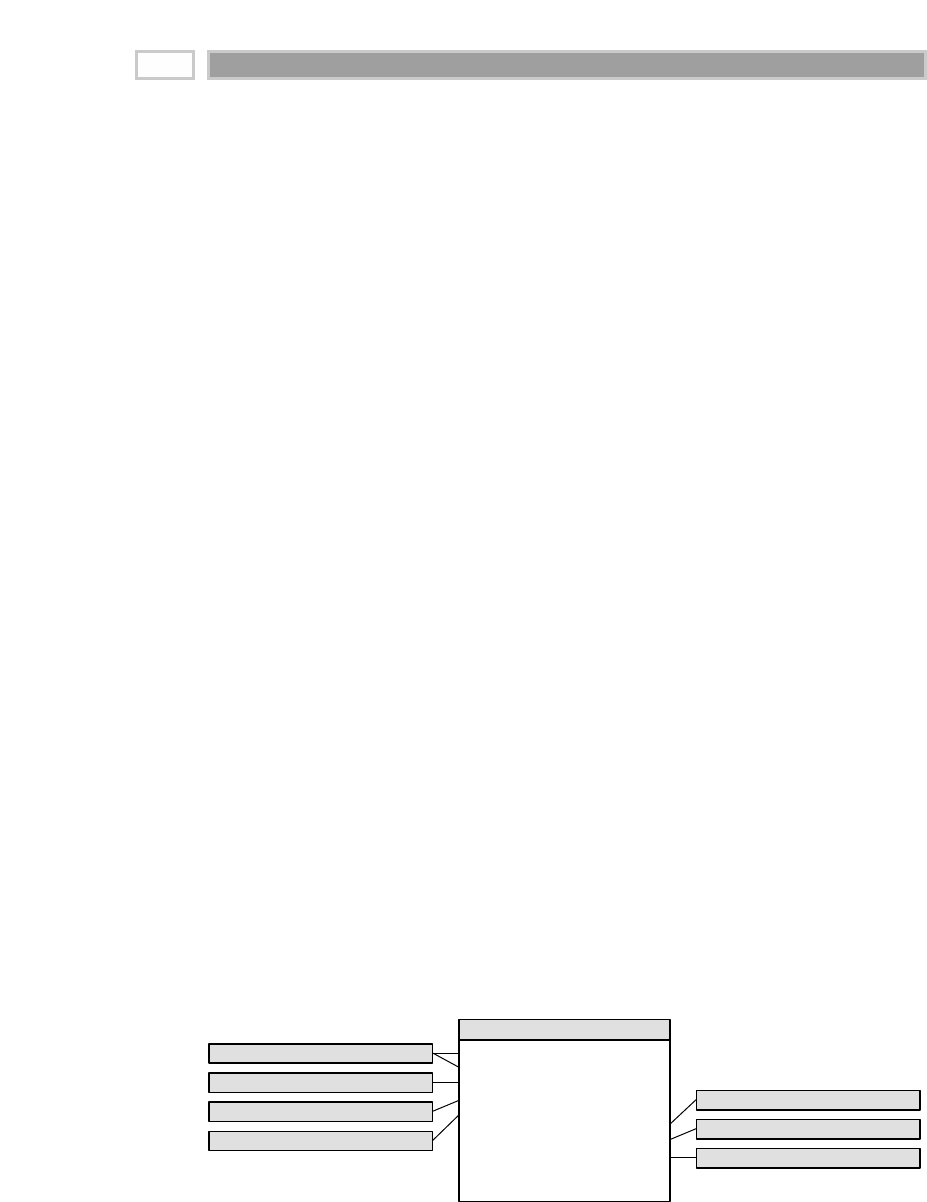

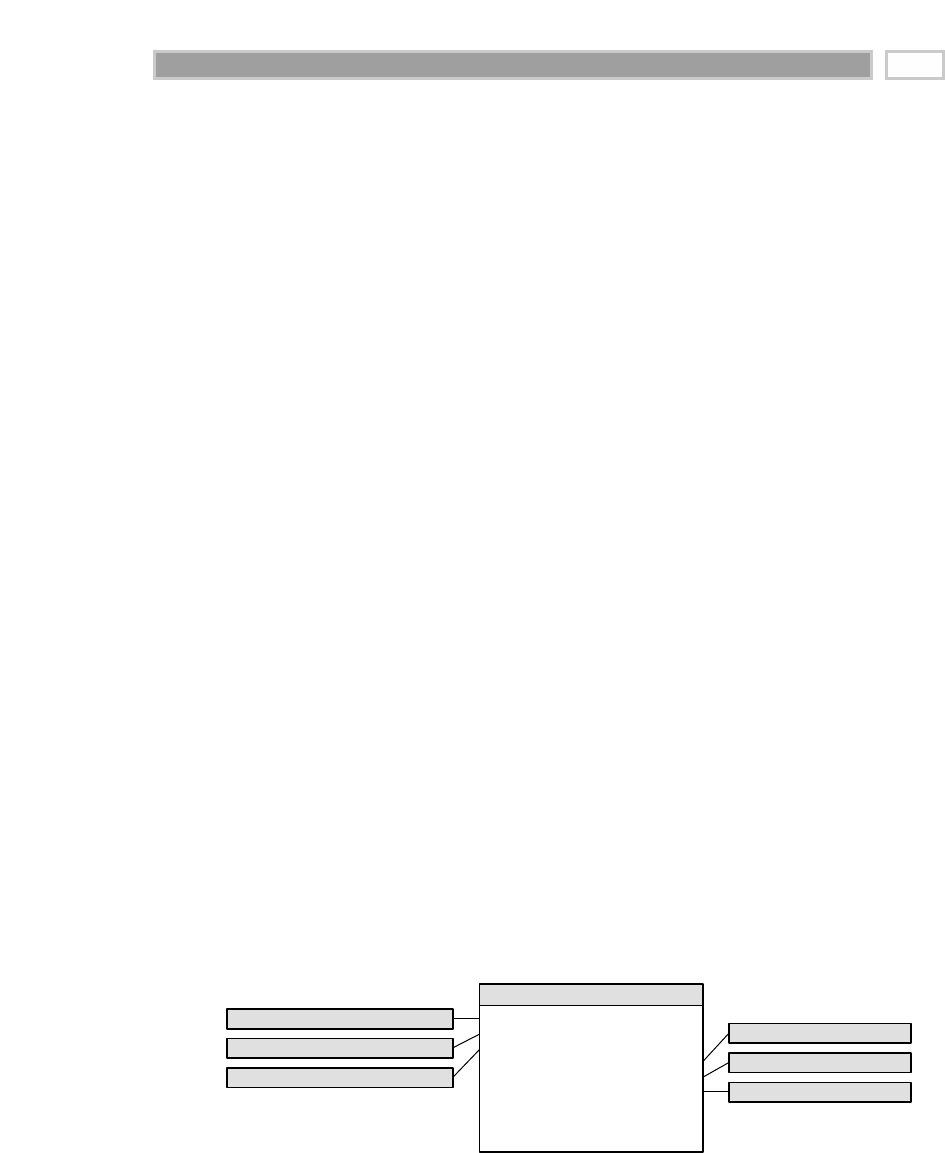

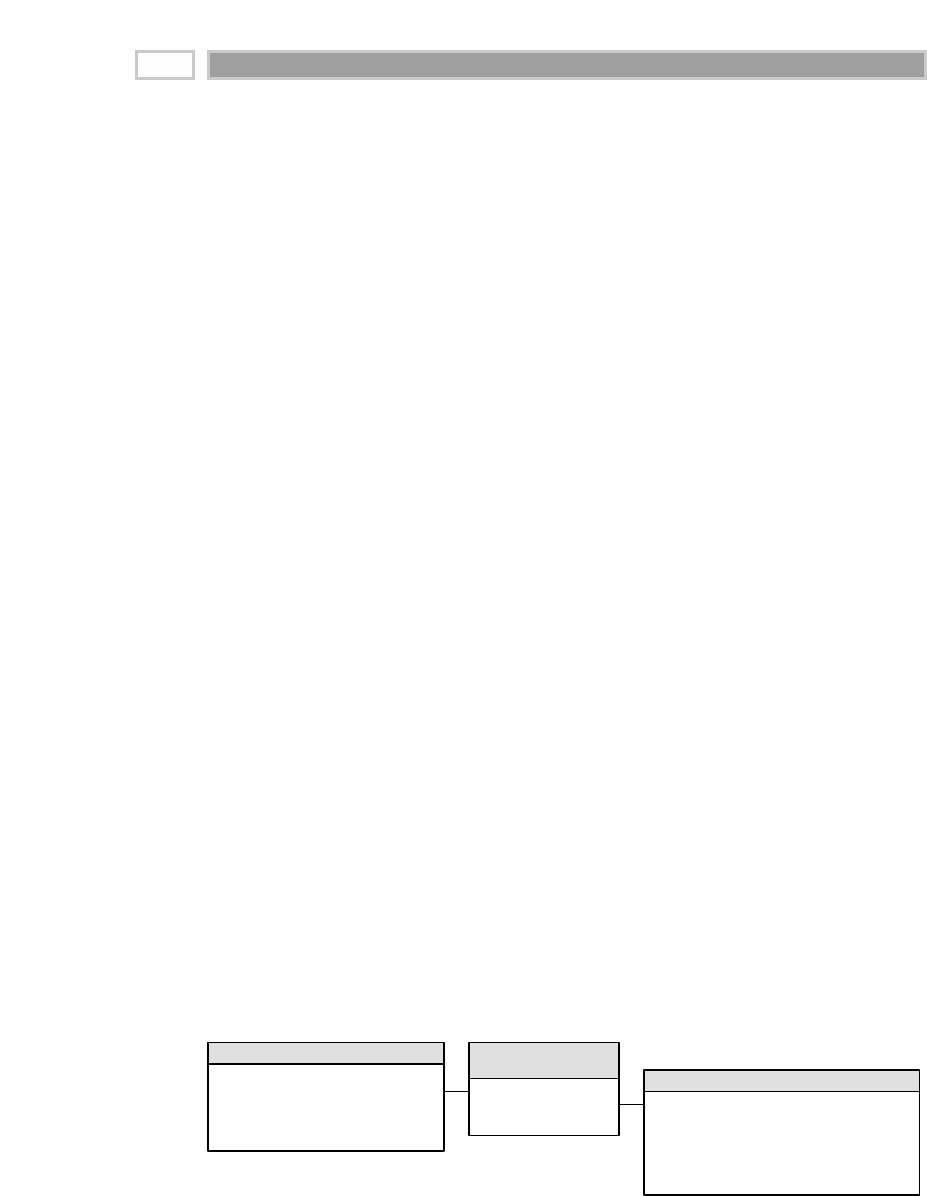

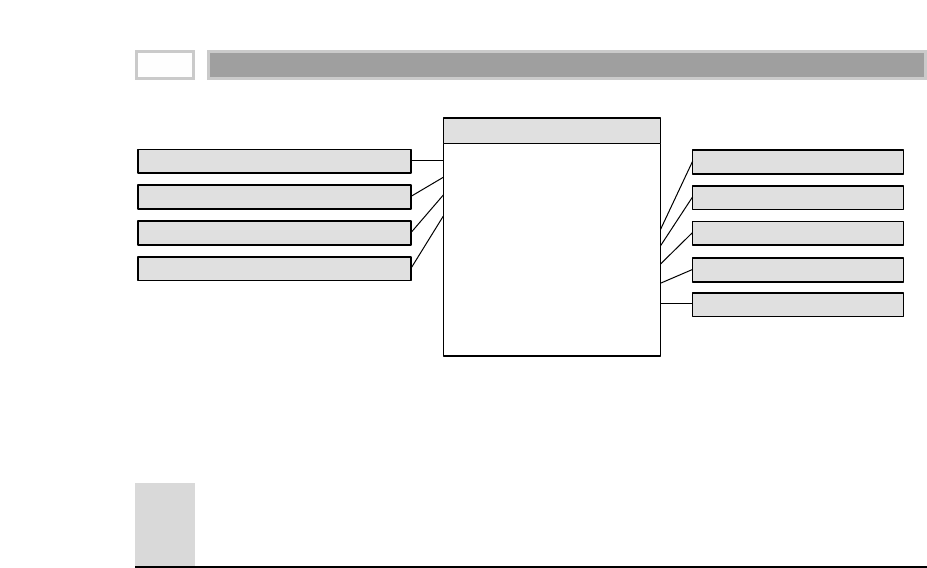

Fact Table

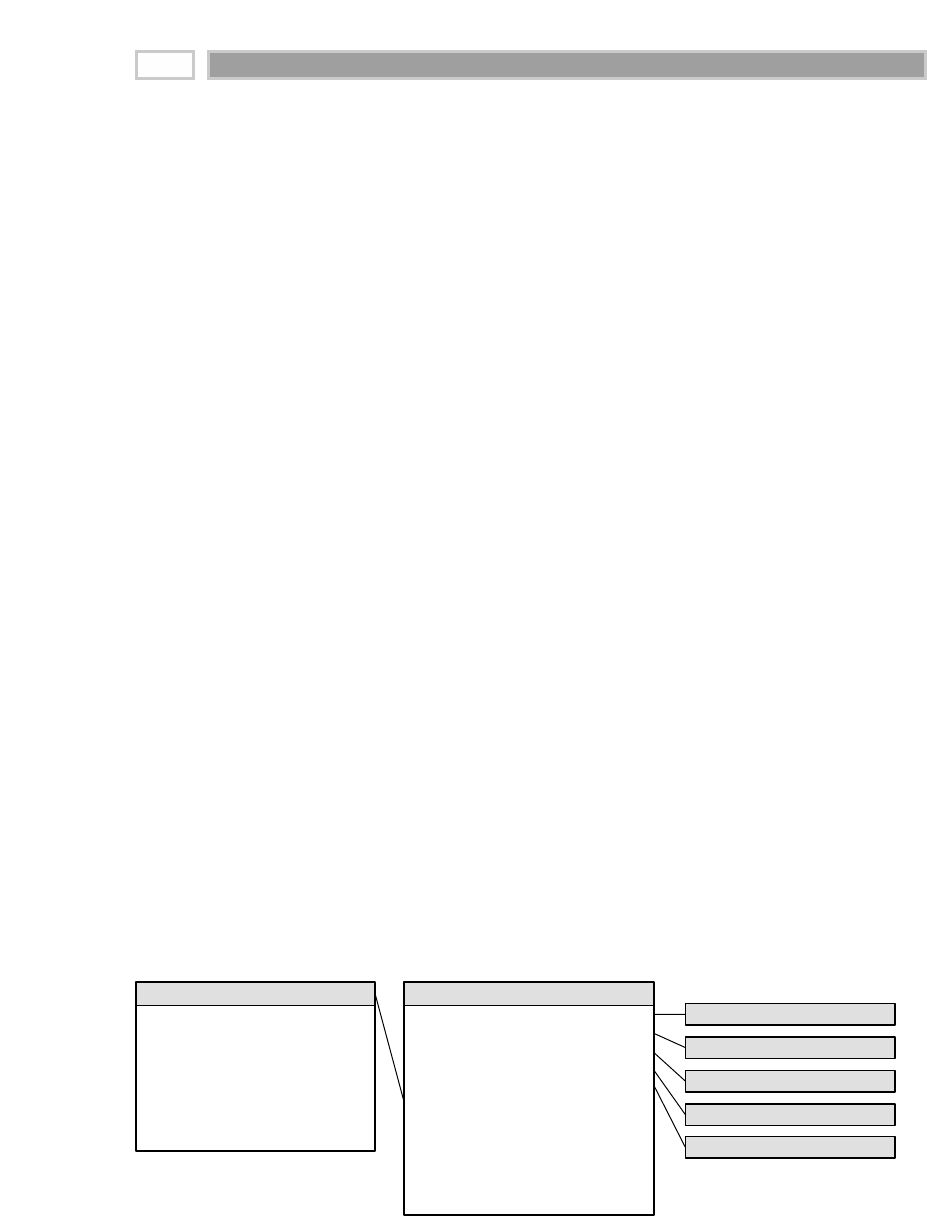

A fact table is the primary table in a dimensional model where the numerical

performance measurements of the business are stored, as illustrated in Figure

1.2. We strive to store the measurement data resulting from a business process

in a single data mart. Since measurement data is overwhelmingly the largest

part of any data mart, we avoid duplicating it in multiple places around the

enterprise.

CHAPTER 1

16

TEAMFLY

Team-Fly®

Figure 1.2 Sample fact table.

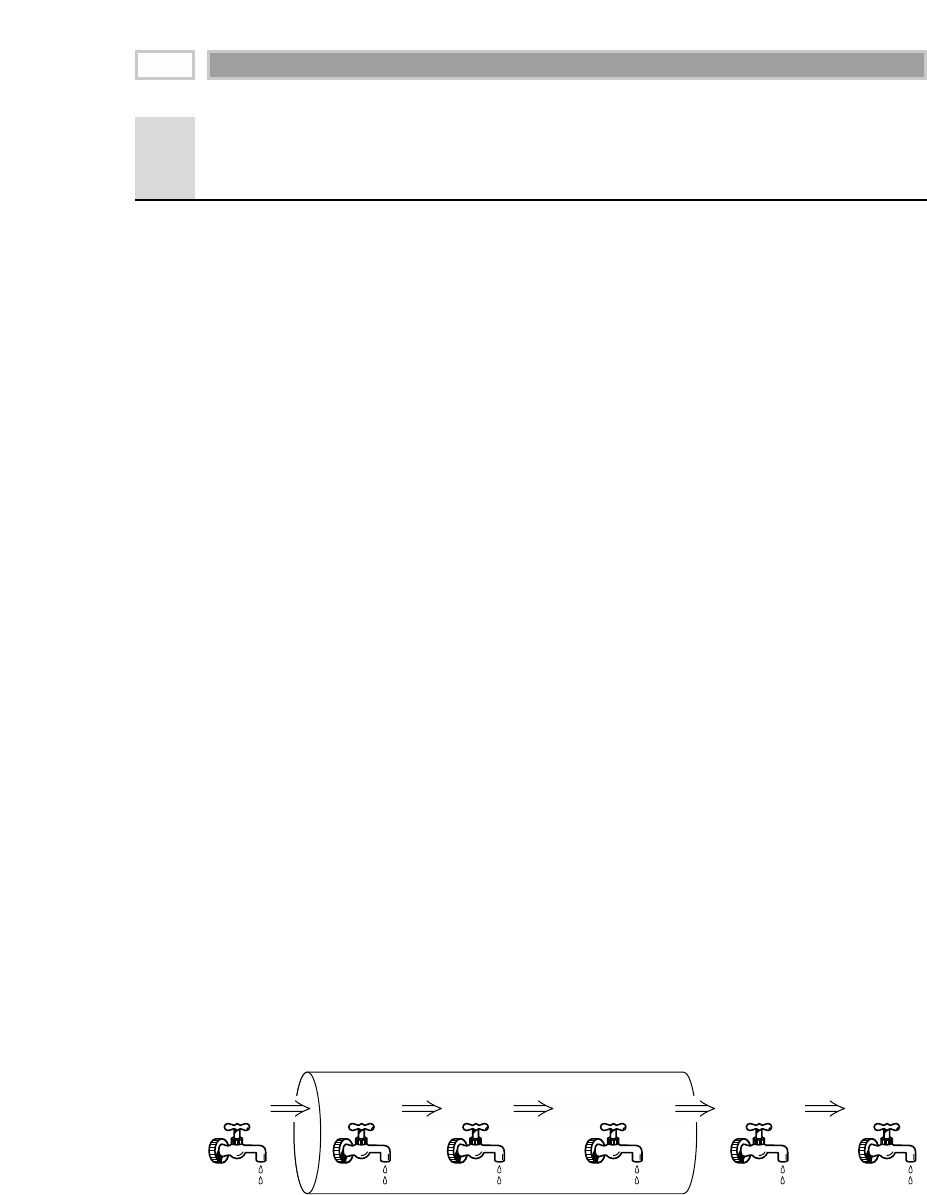

We use the term fact to represent a business measure. We can imagine standing

in the marketplace watching products being sold and writing down the quan-