Linux Administration II//Linux As A Network Client Adm2 En Manual

User Manual:

Open the PDF directly: View PDF ![]() .

.

Page Count: 217 [warning: Documents this large are best viewed by clicking the View PDF Link!]

- Contents

- List of Tables

- List of Figures

- Preface

- System Logging

- System Logging with Systemd and ``The Journal''

- TCP/IP Fundamentals

- Linux Network Configuration

- Network Troubleshooting

- *inetd and *xinetd

- Network services with systemd

- System Time

- Printing on Linux

- The Secure Shell

- Electronic Mail

- Introduction to GnuPG

- Linux and Security: An Introduction

- Sample Solutions

- LPIC-1 Certification

- Command Index

- Index

Version 4.0

Linux Administration II

Linux as a Network Client

$ echo tux

tux

$ ls

hallo.c

hallo.o

$ /bin/su -

Password:

tuxcademy – Linux and Open Source learning materials for everyone

www.tuxcademy.org ⋅info@tuxcademy.org

This training manual is designed to correspond to the objectives of the LPI-102 (LPIC-1, version

4.0) certification exam promulgated by the Linux Professional Institute. Further details are

available in Appendix B.

The Linux Professional Institute does not endorse specific exam preparation materials or tech-

niques. For details, refer to

info@lpi.org

.

The tuxcademy project aims to supply freely available high-quality training materials on

Linux and Open Source topics – for self-study, school, higher and continuing education

and professional training.

Please visit

http://www.tuxcademy.org/

! Do contact us with questions or suggestions.

Linux Administration II Linux as a Network Client

Revision:

adm2:0cd011e4d0e3d9e9:2015-08-21

adm2:0cd20ee1646f650c:2015-08-21

1–13, B

adm2:D6IMdRN77OjUKOKAMJE2Cq

© 2015 Linup Front GmbH Darmstadt, Germany

© 2015 tuxcademy (Anselm Lingnau) Darmstadt, Germany

http://www.tuxcademy.org

⋅

info@tuxcademy.org

Linux penguin “Tux” © Larry Ewing (CC-BY licence)

All representations and information contained in this document have been com-

piled to the best of our knowledge and carefully tested. However, mistakes cannot

be ruled out completely. To the extent of applicable law, the authors and the tux-

cademy project assume no responsibility or liability resulting in any way from the

use of this material or parts of it or from any violation of the rights of third parties.

Reproduction of trade marks, service marks and similar monikers in this docu-

ment, even if not specially marked, does not imply the stipulation that these may

be freely usable according to trade mark protection laws. All trade marks are used

without a warranty of free usability and may be registered trade marks of third

parties.

This document is published under the “Creative Commons-BY-SA 4.0 Interna-

tional” licence. You may copy and distribute it and make it publically available as

long as the following conditions are met:

Attribution You must make clear that this document is a product of the tux-

cademy project.

Share-Alike You may alter, remix, extend, or translate this document or modify

or build on it in other ways, as long as you make your contributions available

under the same licence as the original.

Further information and the full legal license grant may be found at

http://creativecommons.org/licenses/by-sa/4.0/

Authors: Anselm Lingnau, Tobias Elsner

Technical Editor: Anselm Lingnau ⟨

anselm@tuxcademy.org

⟩

English Translation: Anselm Lingnau

Typeset in Palatino, Optima and DejaVu Sans Mono

$ echo tux

tux

$ ls

hallo.c

hallo.o

$ /bin/su -

Password:

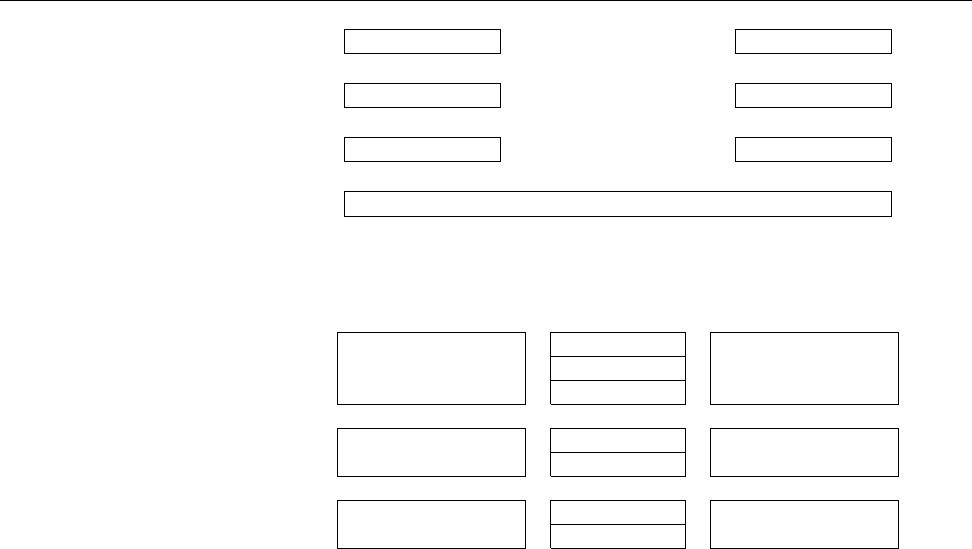

Contents

1 System Logging 13

1.1 The Problem . . . . . . . . . . . . . . . . . . . . . 14

1.2 The Syslog Daemon . . . . . . . . . . . . . . . . . . . 14

1.3 Log Files . . . . . . . . . . . . . . . . . . . . . . . 17

1.4 Kernel Logging . . . . . . . . . . . . . . . . . . . . 18

1.5 Extended Possibilities: Rsyslog . . . . . . . . . . . . . . . 18

1.6 The “next generation”: Syslog-NG. . . . . . . . . . . . . . 22

1.7 The

logrotate

Program..................26

2 System Logging with Systemd and “The Journal” 31

2.1 Fundamentals . . . . . . . . . . . . . . . . . . . . . 32

2.2 Systemd and journald . . . . . . . . . . . . . . . . . . 33

2.3 Log Inspection . . . . . . . . . . . . . . . . . . . . . 35

3 TCP/IP Fundamentals 41

3.1 History and Introduction . . . . . . . . . . . . . . . . . 42

3.1.1 The History of the Internet . . . . . . . . . . . . . . 42

3.1.2 Internet Administration . . . . . . . . . . . . . . . 42

3.2 Technology . . . . . . . . . . . . . . . . . . . . . . 44

3.2.1 Overview . . . . . . . . . . . . . . . . . . . . 44

3.2.2 Protocols . . . . . . . . . . . . . . . . . . . . . 45

3.3 TCP/IP.......................47

3.3.1 Overview . . . . . . . . . . . . . . . . . . . . 47

3.3.2 End-to-End Communication: IP and ICMP . . . . . . . . 48

3.3.3 The Base for Services: TCP and UDP . . . . . . . . . . . 51

3.3.4 The Most Important Application Protocols. . . . . . . . . 54

3.4 Addressing, Routing and Subnetting . . . . . . . . . . . . . 56

3.4.1 Basics . . . . . . . . . . . . . . . . . . . . . . 56

3.4.2 Routing . . . . . . . . . . . . . . . . . . . . . 57

3.4.3 IP Network Classes . . . . . . . . . . . . . . . . . 58

3.4.4 Subnetting . . . . . . . . . . . . . . . . . . . . 58

3.4.5 Private IP Addresses . . . . . . . . . . . . . . . . 59

3.4.6 Masquerading and Port Forwarding . . . . . . . . . . . 60

3.5 IPv6.........................61

3.5.1 IPv6 Addressing . . . . . . . . . . . . . . . . . . 62

4 Linux Network Configuration 67

4.1 Network Interfaces . . . . . . . . . . . . . . . . . . . 68

4.1.1 Hardware and Drivers . . . . . . . . . . . . . . . . 68

4.1.2 Configuring Network Adapters Using

ifconfig

.......69

4.1.3 Configuring Routing Using

route

............70

4.1.4 Configuring Network Settings Using

ip

..........72

4.2 Persistent Network Configuration . . . . . . . . . . . . . . 73

4.3 DHCP........................76

4.4 IPv6 Configuration . . . . . . . . . . . . . . . . . . . 77

4.5 Name Resolution and DNS . . . . . . . . . . . . . . . . 78

4 Contents

5 Network Troubleshooting 83

5.1 Introduction. . . . . . . . . . . . . . . . . . . . . . 84

5.2 Local Problems. . . . . . . . . . . . . . . . . . . . . 84

5.3 Checking Connectivity With

ping

..............84

5.4 Checking Routing Using

traceroute

And

tracepath

........87

5.5 Checking Services With

netstat

And

nmap

...........90

5.6 Testing DNS With

host

And

dig

...............93

5.7 Other Useful Tools For Diagnosis . . . . . . . . . . . . . . 95

5.7.1

telnet

and

netcat

..................95

5.7.2

tcpdump

......................97

5.7.3

wireshark

.....................97

6

inetd

and

xinetd

99

6.1 Offering Network Services with

inetd

.............100

6.1.1 Overview . . . . . . . . . . . . . . . . . . . . 100

6.1.2

inetd

Configuration . . . . . . . . . . . . . . . . . 100

6.2 The TCP Wrapper—

tcpd

.................101

6.3

xinetd

........................104

6.3.1 Overview . . . . . . . . . . . . . . . . . . . . 104

6.3.2

xinetd

Configuration. . . . . . . . . . . . . . . . . 104

6.3.3 Launching

xinetd

..................105

6.3.4 Parallel Processing of Requests . . . . . . . . . . . . . 106

6.3.5 Replacing

inetd

by

xinetd

...............106

7 Network services with systemd 109

7.1 Introductory Remarks . . . . . . . . . . . . . . . . . . 110

7.2 Persistent Network Services . . . . . . . . . . . . . . . . 110

7.3 Socket Activation . . . . . . . . . . . . . . . . . . . . 112

8 System Time 117

8.1 Introduction. . . . . . . . . . . . . . . . . . . . . . 118

8.2 Clocks and Time on Linux. . . . . . . . . . . . . . . . . 118

8.3 Time Synchronisation with NTP . . . . . . . . . . . . . . 120

9 Printing on Linux 127

9.1 Overview. . . . . . . . . . . . . . . . . . . . . . . 128

9.2 Commands for Printing . . . . . . . . . . . . . . . . . 129

9.3 CUPS Configuration. . . . . . . . . . . . . . . . . . . 133

9.3.1 Basics . . . . . . . . . . . . . . . . . . . . . . 133

9.3.2 Installing and Configuring a CUPS Server . . . . . . . . . 135

9.3.3 Miscellaneous Hints . . . . . . . . . . . . . . . . . 139

10 The Secure Shell 141

10.1 Introduction. . . . . . . . . . . . . . . . . . . . . . 142

10.2 Logging Into Remote Hosts Using

ssh

............142

10.3 Other Useful Applications:

scp

and

sftp

............145

10.4 Public-Key Client Authentication . . . . . . . . . . . . . . 146

10.5 Port Forwarding Using SSH . . . . . . . . . . . . . . . . 148

10.5.1 X11 Forwarding . . . . . . . . . . . . . . . . . . 148

10.5.2 Forwarding Arbitrary TCP Ports . . . . . . . . . . . . 149

11 Electronic Mail 153

11.1 Fundamentals . . . . . . . . . . . . . . . . . . . . . 154

11.2 MTAs for Linux . . . . . . . . . . . . . . . . . . . . 154

11.3 Basic Functionality . . . . . . . . . . . . . . . . . . . 155

11.4 Managing The Mail Queue . . . . . . . . . . . . . . . . 156

11.5 Local Delivery, Aliases And User-Specific Forwarding . . . . . . 156

5

12 Introduction to GnuPG 159

12.1 Asymmetric Cryptography and the “Web of Trust” . . . . . . . 160

12.2 Generating and Managing GnuPG Keys. . . . . . . . . . . . 163

12.2.1 Generating Key Pairs . . . . . . . . . . . . . . . . 163

12.2.2 Publishing a Public Key . . . . . . . . . . . . . . . 165

12.2.3 Importing and Signing Public Keys . . . . . . . . . . . 166

12.3 Encrypting and Decrypting Data . . . . . . . . . . . . . . 169

12.4 Signing Files and Verifying Signatures . . . . . . . . . . . . 171

12.5 GnuPG Configuration . . . . . . . . . . . . . . . . . . 173

13 Linux and Security: An Introduction 175

13.1 Introduction. . . . . . . . . . . . . . . . . . . . . . 176

13.2 File System Security . . . . . . . . . . . . . . . . . . . 176

13.3 Users and Files. . . . . . . . . . . . . . . . . . . . . 179

13.4 Resource Limits . . . . . . . . . . . . . . . . . . . . 182

13.5 Administrator Privileges With

sudo

..............186

13.6 Basic Networking Security . . . . . . . . . . . . . . . . 190

A Sample Solutions 193

B LPIC-1 Certification 203

B.1 Overview. . . . . . . . . . . . . . . . . . . . . . . 203

B.2 Exam LPI-102 . . . . . . . . . . . . . . . . . . . . . 203

B.3 LPI Objectives In This Manual . . . . . . . . . . . . . . . 204

C Command Index 211

Index 213

$ echo tux

tux

$ ls

hallo.c

hallo.o

$ /bin/su -

Password:

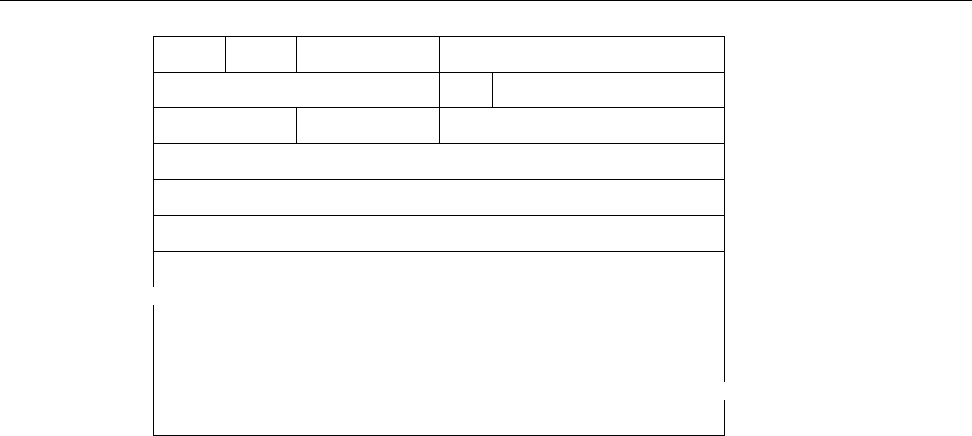

List of Tables

1.1

syslogd

facilities .............................. 15

1.2

syslogd

priorities (with ascending urgency) . . . . . . . . . . . . . . 15

1.3 Filtering functions for Syslog-NG . . . . . . . . . . . . . . . . . . . . 24

3.1 Common application protocols based on TCP/IP . . . . . . . . . . . 55

3.2 Addressingexample ........................... 57

3.3 Traditional IP Network Classes . . . . . . . . . . . . . . . . . . . . . 58

3.4 SubnettingExample............................ 59

3.5 Private IP address ranges according to RFC 1918 . . . . . . . . . . . 59

4.1 Options within

/etc/resolv.conf

..................... 79

5.1 Important

ping

options .......................... 86

6.1 Text substitutions in command entries in

/etc/hosts.allow

and

/etc/

hosts.deny

..................................102

6.2 Attributes in the

/etc/xinetd.conf

file ..................105

6.3

xinetd

andsignals .............................106

13.1 Access codes for processes with

fuser

..................181

$ echo tux

tux

$ ls

hallo.c

hallo.o

$ /bin/su -

Password:

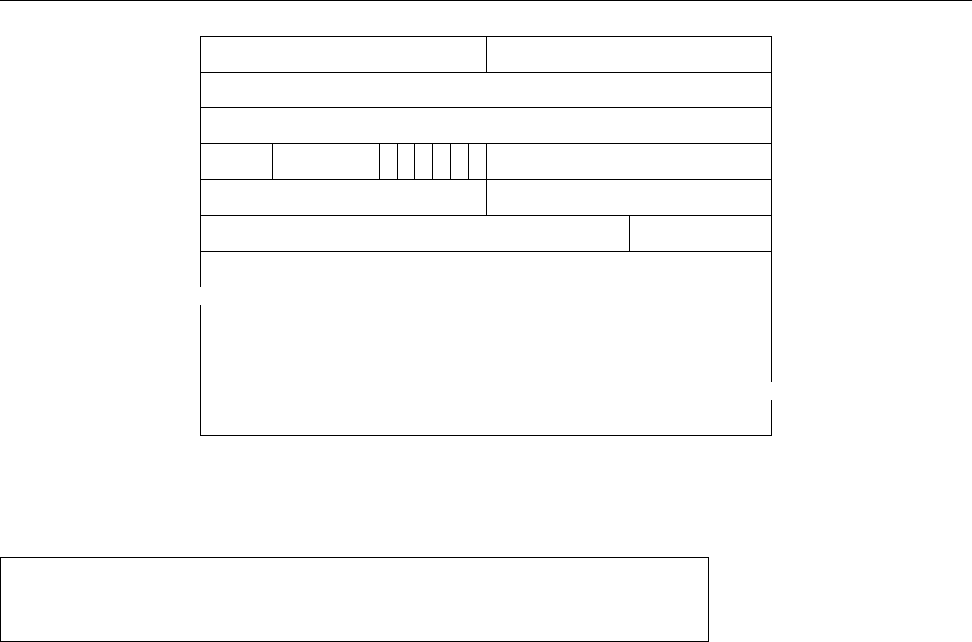

List of Figures

1.1 Example configuration for

logrotate

(Debian GNU/Linux 8.0) . . . 27

2.1 Complete log output of

journalctl

.................... 38

3.1 Protocols and service interfaces . . . . . . . . . . . . . . . . . . . . . 46

3.2 ISO/OSI reference model . . . . . . . . . . . . . . . . . . . . . . . . 46

3.3 Structure of an IP datagram . . . . . . . . . . . . . . . . . . . . . . . 49

3.4 Structure of an ICMP packet . . . . . . . . . . . . . . . . . . . . . . . 50

3.5 Structure of a TCP Segment . . . . . . . . . . . . . . . . . . . . . . . 51

3.6 Starting a TCP connection: The Three-Way Handshake . . . . . . . 52

3.7 Structure of a UDP datagram . . . . . . . . . . . . . . . . . . . . . . 53

3.8 The

/etc/services

file(excerpt)...................... 54

4.1

/etc/resolv.conf

example ......................... 79

4.2 The

/etc/hosts

file(SUSE)......................... 80

7.1 Unit file for Secure Shell daemon (Debian 8) . . . . . . . . . . . . . . 114

9.1 The

mime.types

file(excerpt)........................133

9.2 The

/etc/cups/mime.convs

file(excerpt)..................134

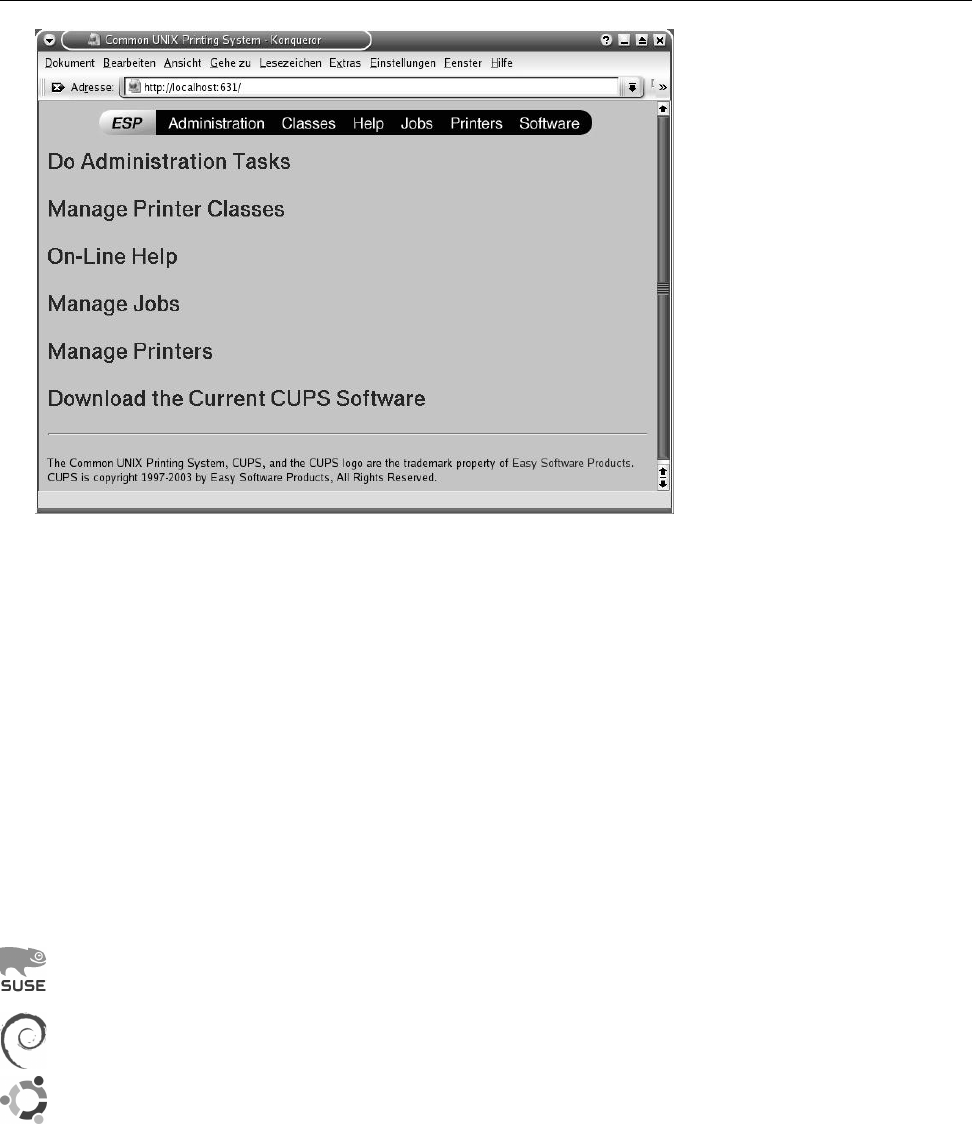

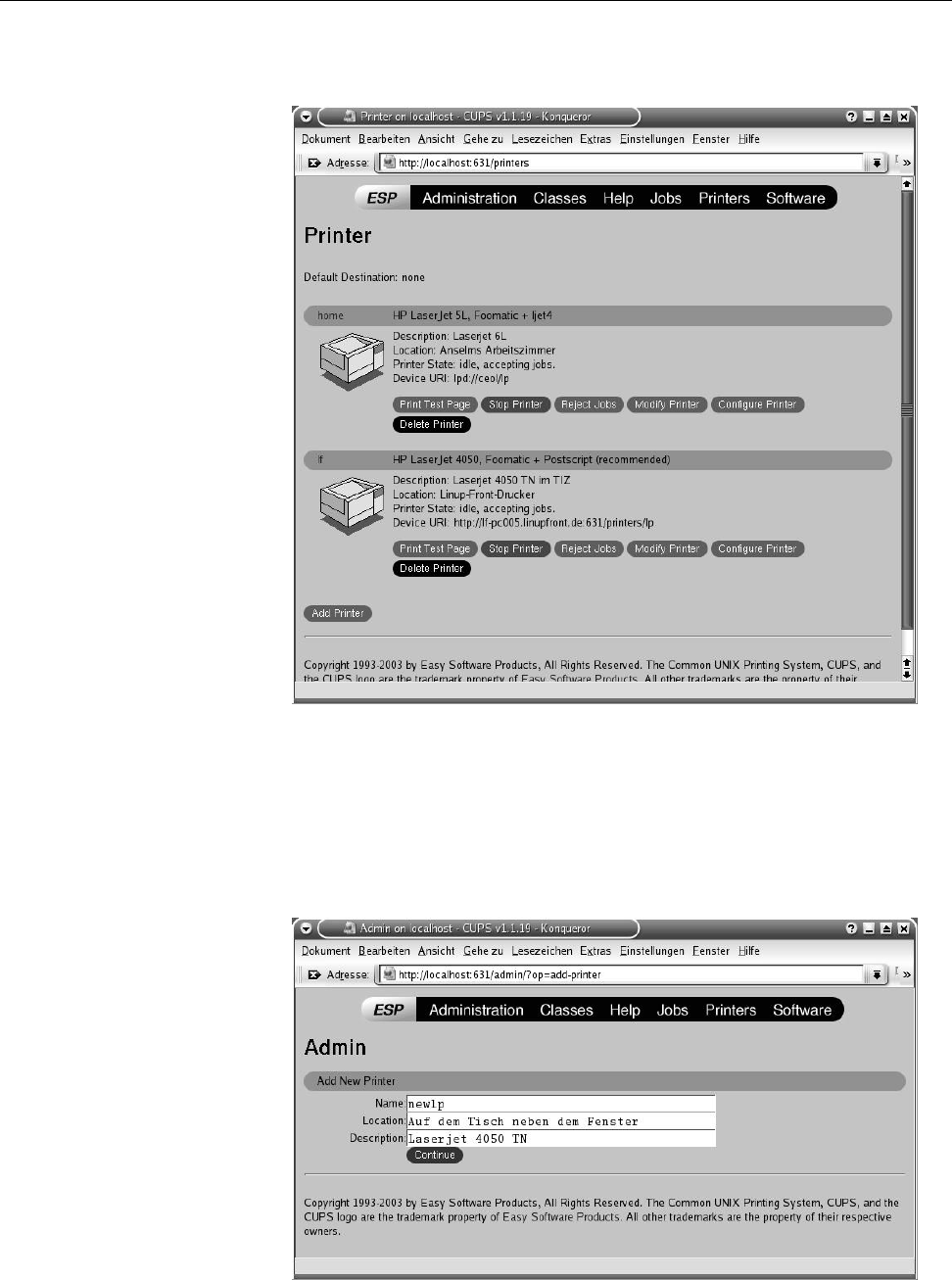

9.3 The CUPS web interface . . . . . . . . . . . . . . . . . . . . . . . . . 135

9.4 The CUPS web interface: Printer management . . . . . . . . . . . . 136

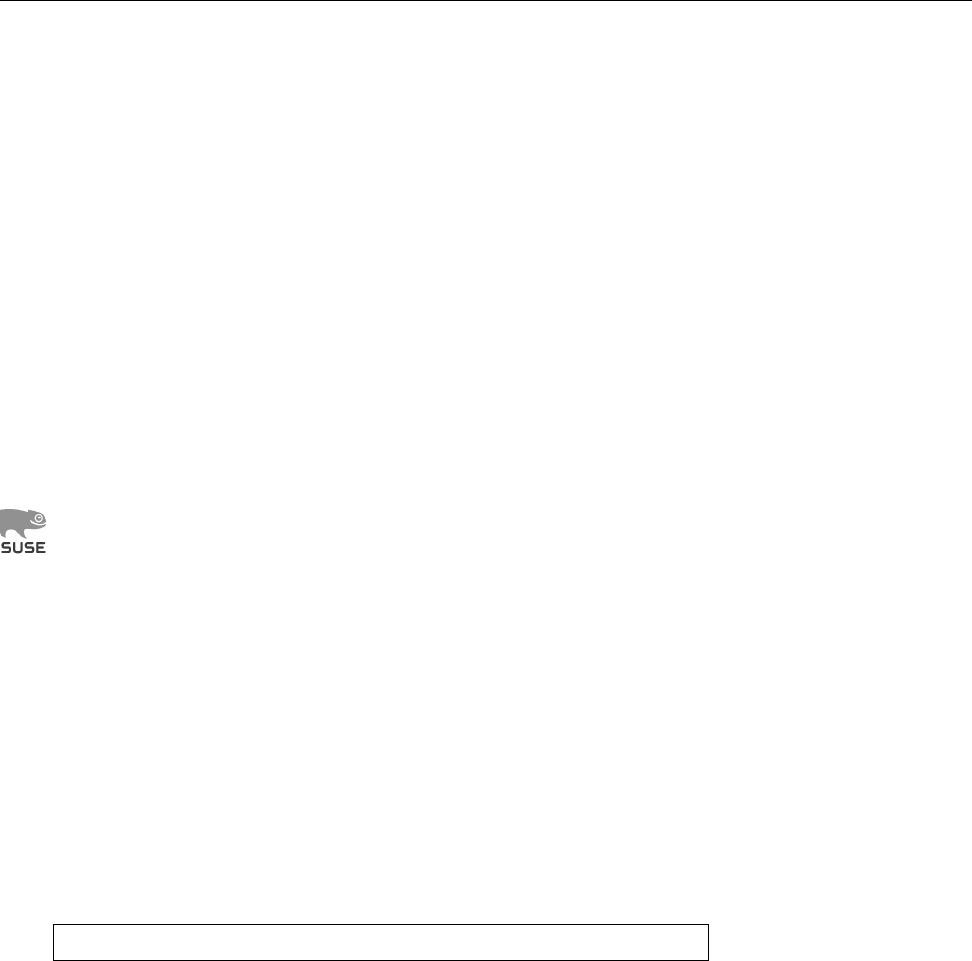

9.5 The CUPS web interface: Adding a printer . . . . . . . . . . . . . . 136

9.6 An

/etc/cups/printers.conf

file (excerpt) . . . . . . . . . . . . . . . . . 138

$ echo tux

tux

$ ls

hallo.c

hallo.o

$ /bin/su -

Password:

Preface

This training manual deals with the knowledge necessary to configure and oper-

ate a Linux workstation as part of an (existing) local area network.

It is aimed towards advanced Linux administrators and presumes knowledge

on a level tested in the LPI-101 exam. This includes solid experience using the

shell, a text editor, and the fundamental Linux commands as well as the basics

of Linux administration. In addition, this training manual builds on the manual

Advanced Linux, which covers topics such as shell programming,

sed

and

awk

,

cron

,

and

at

.

After an introduction to the system logging service, the fundamentals of

TCP/IP and Linux network configuration, this manual covers the details of net-

work troubleshooting and explains how to start services using

inetd

and

xinetd

. In

addition, we cover topics like managing the system time, printing and important

network services such as the secure shell and connecting a client to a mail server.

The manual closes with an introduction to encrypting files using GnuPG and an

overview of Linux security.

The successful completion of this manual or comparable knowledge are a pre-

requisite for making the most of additional Linux courses and for obtaining Linux

Professional Institute certification.

This courseware package is designed to support the training course as effi-

ciently as possible, by presenting the material in a dense, extensive format for

reading along, revision or preparation. The material is divided in self-contained

chapters detailing a part of the curriculum; a chapter’s goals and prerequisites chapters

goals

prerequisites

are summarized clearly at its beginning, while at the end there is a summary and

(where appropriate) pointers to additional literature or web pages with further

information.

BAdditional material or background information is marked by the “light-

bulb” icon at the beginning of a paragraph. Occasionally these paragraphs

make use of concepts that are really explained only later in the courseware,

in order to establish a broader context of the material just introduced; these

“lightbulb” paragraphs may be fully understandable only when the course-

ware package is perused for a second time after the actual course.

AParagraphs with the “caution sign” direct your attention to possible prob-

lems or issues requiring particular care. Watch out for the dangerous bends!

CMost chapters also contain exercises, which are marked with a “pencil” icon exercises

at the beginning of each paragraph. The exercises are numbered, and sam-

ple solutions for the most important ones are given at the end of the course-

ware package. Each exercise features a level of difficulty in brackets. Exer-

cises marked with an exclamation point (“!”) are especially recommended.

Excerpts from configuration files, command examples and examples of com-

puter output appear in

typewriter type

. In multiline dialogs between the user and

the computer, user input is given in

bold typewriter type

in order to avoid misun-

derstandings. The “” symbol appears where part of a command’s output

had to be omitted. Occasionally, additional line breaks had to be added to make

12 Preface

things fit; these appear as “

”. When command syntax is discussed, words enclosed in angle brack-

ets (“⟨Word⟩”) denote “variables” that can assume different values; material in

brackets (“[

-f

⟨file⟩]”) is optional. Alternatives are separated using a vertical bar

(“

-a

|

-b

”).

Important concepts are emphasized using “marginal notes” so they can be eas-Important concepts

ily located; definitions of important terms appear in bold type in the text as well

definitions as in the margin.

References to the literature and to interesting web pages appear as “[GPL91]”

in the text and are cross-referenced in detail at the end of each chapter.

We endeavour to provide courseware that is as up-to-date, complete and error-

free as possible. In spite of this, problems or inaccuracies may creep in. If you

notice something that you think could be improved, please do let us know, e.g.,

by sending e-mail to

info@tuxcademy.org

(For simplicity, please quote the title of the courseware package, the revision ID

on the back of the title page and the page number(s) in question.) Thank you very

much!

LPIC-1 Certification

These training materials are part of a recommended curriculum for LPIC-1 prepa-

ration. Refer to Appendix B for further information.

$ echo tux

tux

$ ls

hallo.c

hallo.o

$ /bin/su -

Password:

1

System Logging

Contents

1.1 The Problem . . . . . . . . . . . . . . . . . . . . . 14

1.2 The Syslog Daemon . . . . . . . . . . . . . . . . . . . 14

1.3 Log Files . . . . . . . . . . . . . . . . . . . . . . . 17

1.4 Kernel Logging . . . . . . . . . . . . . . . . . . . . 18

1.5 Extended Possibilities: Rsyslog . . . . . . . . . . . . . . . 18

1.6 The “next generation”: Syslog-NG. . . . . . . . . . . . . . 22

1.7 The

logrotate

Program..................26

Goals

• Knowing the syslog daemon and how to configure it

• Being able to manage log file using

logrotate

• Understanding how the Linux kernel handles log messages

Prerequisites

• Basic knowledge of the components of a Linux system

• Handling configuration files

adm2-syslog.tex

(

0cd20ee1646f650c

)

14 1 System Logging

1.1 The Problem

Application programs need to tell their users something now and then. The com-

pletion of a task or an error situation or warning must be reported in a suitable

manner. Text-oriented programs output appropriate messages on their “termi-

nal”; GUI-based programs might use “alert boxes” or status lines whose content

changes.

The operating system kernel and the system and network services running in

the background, however, are not connected to user terminals. If such a process

wants to output a message, it might write it to the system console’s screen; on X11,

such messages might show up in the

xconsole

window.

In multi-user mode, writing a system message to the system console only is

not sufficient. Firstly, it is not clear that the message will actually be read by

root

,

secondly, these screen messages cannot be saved and may easily get lost.

1.2 The Syslog Daemon

The solution of this problem consists of the syslog daemon or

syslogd

. Instead of

outputting a message directly, system messages with a specific meaning can be

output using the

syslog()

function, which is part of the Linux C runtime library.

Such messages are accepted by

syslogd

via the local socket

/dev/log

.

BKernel messages are really handled by a different program called

klogd

. This

program preprocesses the messages and usually passes them along to

sys-

logd

. See section 1.4.

syslogd

proves very useful when debugging. It logs the different system messageslog

and is—as its name suggests—a daemon program. The

syslogd

program is usually

started via an init script while the system is booted. When it receives messages, it

can write them to a file or sends them on across the network to another computer

which manages a centralised log.

BThe common distributions (Debian GNU/Linux, Ubuntu, Red Hat Enter-

prise Linux, Fedora, openSUSE, …) have all been using, for various lengths

of time, a package called “Rsyslog”, which is a more modern implementa-

tion of a

syslogd

with more room for configuration. The additional capabil-

ities are, however, not essential for getting started and/or passing the LPI

exam. If you skip the first part of the Rsyslog configuration file, the remain-

der corresponds, to a very large extent, to what is discussed in this chapter.

There is more about Rsyslog in section 1.5.

Instead of

syslogd

, certain versions of the Novell/SUSE distributions, in par-

ticular the SUSE Linux Enterprise Server, use the Syslog-NG package in-

stead of

syslogd

. This is configured in a substantially different manner. For

the LPIC-1 exam, you need to know that Syslog-NG exists and roughly what

it does; see section 1.6.

The administrator decides what to do with individual messages. The configu-

ration file

/etc/syslog.conf

specifies which messages go where.

/etc/syslog.conf

BBy default, Rsyslog uses

/etc/rsyslog.conf

as its configuration file. This is

largely compatible to what

syslogd

would use. Simply ignore all lines start-

ing with a dollar sign (

$

).

The configuration file consists of two columns and might look like this:

kern.warn;*.err;authpriv.none /dev/tty10

kern.warn;*.err;authpriv.none |/dev/xconsole

*.emerg *

1.2 The Syslog Daemon 15

Table 1.1:

syslogd

facilities

Facility Meaning

authpriv

Confidential security subsystem messages

cron

Messages from

cron

and

at

daemon

Messages from daemon programs with no more specific facility

ftp

FTP daemon messages

kern

System kernel messages

lpr

Printer subsystem messages

mail

Mail subsystem messages

news

Usenet news subsystem messages

syslog syslogd

messages

user

Messages about users

uucp

Messages from the UUCP subsystem

local

𝑟(0≤𝑟≤7) Freely usable for local messages

Table 1.2:

syslogd

priorities (with ascending urgency)

Priority Meaning

none

No priority in the proper sense—serves to exclude all messages from

a certain facility

debug

Message about internal program states when debugging

info

Logging of normal system operations

notice

Documentation of particularly noteworthy situations during normal

system operations

warning

(or

warn

) Warnings about non-serious occurrences which are not se-

rious but still no longer part of normal operations

err

Error messages of all kinds

crit

Critical error messages (the dividing line between this and

err

is not

strictly defined)

alert

“Alarming” messages requiring immediate attention

emerg

Final message before a system crash

*.=warn;*.=err -/var/log/warn

*.crit /var/log/warn

*.*;mail.none;news.none -/var/log/messages

The first column of each line determines which messages will be selected, and the

second line says where these messages go. The first column’s format is

⟨facility⟩

.

⟨priority⟩[

;

⟨facility⟩

.

⟨priority⟩]

…

where the ⟨facility⟩denotes the system program or component giving rise to the facilities

message. This could be the mail server, the kernel itself or the programs managing

access control to the system. Table 1.1 shows the valid facilities. If you specify an

asterisk (“

*

”) in place of a facility, this serves as placeholder for any facility. It

is not easily possible to define additional facilities; the “local” facilities

local0

to

local7

should, however, suffice for most purposes.

The ⟨priority⟩specifies how serious the message is. The valid priorities are priorities

summarised in Table 1.2.

BWho gets to determine what facility or priority is attached to a message?

The solution is simple: Whoever uses the

syslog()

function, namely the de-

veloper of the program in question, must assign a facility and priority to

their code’s messages. Many programs allow the administrator to at least

redefine the message facility.

16 1 System Logging

A selection criterion of the form

mail.info

means “all messages of the mail sub-selection criteria

system with a priority of

info

and above”. If you just want to capture messages

of a single priority, you can do this using a criterion such as

mail.=info

. The as-

terisk (“

*

”) stands for any priority (you could also specify “

debug

”). A preceding

!

implies logical negation:

mail.!info

deselects messages from the mail subsystem

at a priority of

info

and above; this makes most sense in combinations such as

mail.*;mail.!err

, to select certain messages of low priority.

!

and

=

may be com-

bined;

mail.!=info

deselects (exactly) those messages from the mail subsystem with

priority

info

.

You may also specify multiple facilites with the same priority like

mail,news.info

;Multiple facilities—same priority

this expression selects messages of priority

info

and above that belong to the

mail

or

news

facilities.

Now for the right-hand column, the messages’ targets. Log messages can beactions

handled in different ways:

• They can be written to a file. The file name must be specified as an absolute

path. If there is a

-

in front of the path, then unlike normal

syslogd

oper-

ation, the file will not immediately be written to on disk. This means that

in case of a system crash you might lose pending log messages—for fairly

unimportant messages such as those of priority

notice

and below, or for mes-

sages from “chatty” facilities such as mail and news, this may not really be

a problem.

The file name may also refer to a device file (e.g.,

/dev/tty10

in the example

above).

• Log messages can be written to a named pipe (FIFO). The FIFO name must

be given as an absolute path with a preceding “

|

”. One such FIFO is

/dev/

xconsole

.

• They can be passed across the network to another

syslogd

. This is specified

as the name or IP address of the target system with a preceding

@

character.

This is especially useful if a critical system state occurs that renders the local

log file inaccessible; to deprive malicious crackers from a way to hide their

traces; or to collect the log messages of all hosts in a network on a single

computer and process them there.

On the target host, the

syslogd

must have been started using the

-r

(“remote”)

option in order to accept forwarded messages. How to do that depends on

your Linux distribution.

• They can be sent directly to users. The user names in question must be given

as a comma-separated list. The message will be displayed on the listed

users’ terminals if they are logged in when the message arrives.

• They can be sent to all logged-in users by specifying an asterisk (“

*

”) in place

of a login name.

As a rule, after installation your system already contains a running

syslogd

andChanging configuration

a fairly usable

/etc/syslog.conf

. If you want to log more messages, for example

because specific problems are occurring, you should edit the

syslog.conf

file and

then send

syslogd

a

SIGHUP

signal to get it to re-read its configuration file.

BYou can test the

syslogd

mechanism using the

logger

program. An invocation

of the form

$logger -p local0.err -t TEST "Hello World"

produces a log message of the form

Aug 7 18:54:34 red TEST: Hello World

Most modern programming languages make it possible to access the

sys-

log()

function.

1.3 Log Files 17

Exercises

C1.1 [2] Find out when somebody last assumed

root

’s identity using

su

.

C1.2 [!2] Reconfigure

syslogd

such that, in addition to the existing configura-

tion, it writes all (!) messages to a new file called

/var/log/test

. Test your

answer.

C1.3 [3] (Requires two computers and a working network connection.) Recon-

figure

syslogd

on the first computer such that it accepts log messages from

the network. Reconfigure

syslogd

on the second computer such that it sends

messages from facility

local0

to the first computer. Test the configuration.

C1.4 [2] How can you implement a logging mechanism that is safe from at-

tackers that assume control of the logging computer? (An attacker can al-

ways pretend further messages from being logged. We want to ensure that

the attacker cannot change or delete messages that have already been writ-

ten.)

1.3 Log Files

Log files are generally created below

/var/log

. The specific file names vary—refer

/var/log

to the

syslog.conf

file if you’re in doubt. Here are some examples:

Debian GU/Linux collects all messages except those to do with authentica-

tion in the

/var/log/syslog

file. There are separate log files for the

auth

,

daemon

,

kern

,

lpr

,

mail

,

user

, and

uucp

facilities, predictably called

auth.log

etc. On top

of that, the mail system uses files called

mail.info

,

mail.warn

, and

mail.err

,

which respectively contain only those messages with priority

info

etc. (and

above). Debugging messages from all facilities except for

authpriv

,

news

, and

mail

end up in

/var/log/debug

, and messages of priority

info

,

notice

, and

warn

from all facilities except those just mentioned as well as

cron

and

daemon

in

/var/log/messages

.

The defaults on Ubuntu correspond to those on Debian GNU/Linux.

On Red Hat distributions, all messages with a priority of

info

or above,

except those from

authpriv

and

cron

, are written to

/var/log/messages

, while

messages from

authpriv

are written to

/var/log/secure

and those from

cron

to

/var/log/cron

. All messages from the mail system end up in

/var/log/maillog

.

OpenSUSE logs all messages except those from

iptables

and the

news

and

mail

facilities to

/var/log/messages

. Messages from

iptables

go to

/var/log/

firewall

. Messages that are not from

iptables

and have priority

warn

,

err

,

or

crit

are also written to

/var/log/warn

. Furthermore, there are the

/var/

log/localmessages

file for messages from the

local*

facilities, the

/var/log/

NetworkManager

file for messages from the

NetworkManager

program, and the

/var/log/acpid

file for messages from the ACPI daemon. The mail sys-

tem writes its log both to

/var/log/mail

(all messages) and to the files

mail.info

,

mail.warn

, and

mail.err

(the latter for the priorities

err

and

crit

),

while the news system writes its log to

news/news.notice

,

news/news.err

, and

news/news.crit

(according to the priority)—there is no overview log file for

news. (If you think this is inconsistent and confusing, you are not alone.)

ASome log files contain messages concerninig users’ privacy and should thus

only be readable by

root

. In most cases, the distributions tend to err towards

caution and restrict the access rights to all log files.

18 1 System Logging

You can peruse the log files created by

syslogd

using

less

;

tail

lends itself toInspecting log files

long files (possibly using the

-f

option). There are also special tools for reading

log files, the most popular of which include

logsurfer

and

xlogmaster

.

The messages written by

syslogd

normally contain the date and time, the hostmessages

name, a hint about the process or component that created the message, and the

message itself. Typical messages might look like this:

Mar 31 09:56:09 red modprobe: modprobe: Can't locate ...

Mar 31 11:10:08 red su: (to root) user1 on /dev/pts/2

Mar 31 11:10:08 red su: pam-unix2: session started for ...

You can remove an overly large log file using

rm

or save it first by renaming it

with an extension like

.old

. A new log file will be created when

syslogd

is next

restarted. However, there are more convenient methods.

1.4 Kernel Logging

The Linux kernel does not send its log messages to

syslogd

but puts them into

an internal “ring buffer”. They can be read from there in various ways—via a

specialised system call, or the

/proc/kmsg

“file”. Traditionally, a program called

klogd

is used to read

/proc/kmsg

and pass the messages on to

syslogd

.

BRsyslog gets by without a separate

klogd

program, because it takes care of

kernel log messages directly by itself. Hence, if you can’t find a

klogd

on your

system, this may very likely be because it is using rsyslog.

During system startup,

syslogd

and possibly

klogd

are not immediately available—

they must be started as programs and thus cannot handle the kernel’s start mes-

sages directly. The

dmesg

command makes it possible to access the kernel log buffer

retroactively and look at the system start log. With a command such as

#dmesg >boot.msg

you can write these messages to a file and send it to a kernel developer.

BUsing the

dmesg

command you can also delete the kernel ring buffer (

-c

op-

tion) and set a priority for direct notifications: messages meeting or exceed-

ing this priority will be sent to the console immediately (

-n

option). Kernel

messages have priorities from 0to 7corresponding to the

syslogd

priorities

from

emerg

down to

debug

. The command

#dmesg -n 1

for example causes only

emerg

messages to be written to the console directly.

All messages will be written to

/proc/kmsg

in every case—here it is the job of

postprocessing software such as

syslogd

to suppress unwanted messages.

Exercises

C1.5 [2] What does

dmesg

output tell you about the hardware in your com-

puter?

1.5 Extended Possibilities: Rsyslog

Rsyslog by Rainer Gerhards has replaced the traditional BSD

syslogd

on most com-

mon Linux distributions. Besides greater efficiency, rsyslog’s goal is supporting

various sources and sinks for log messages. For example, it writes messages not

just to text files and terminals, but also a wide selection of databases.

1.5 Extended Possibilities: Rsyslog 19

BAccording to its own web site, “rsyslog” stands for “rocket-fast syslog”.

Of course one should not overestimate the value of that kind of self-

aggrandisement, but in this case the self-praise is not entirely unwarranted.

The basic ideas behind rsyslog are basically as follows:

• “Sources” pass messages on to “rulesets”. There is one standard built-in

ruleset (

RSYSLOG_DefaultRuleset

), but you as the user get to define others.

• Every ruleset may contain arbitrarily many rules (even none at all, even

though that does not make a great deal of sense).

• A rule consists of a “filter” and an “action list”. Filters make yes-no deci-

sions about whether the corresponding action list will be executed.

• For each message, all the rules in the ruleset will be executed in order from

the first to the last (and no others). All rules will always be executed, no

matter how the filter decisions go, although there is a “stop processing”

action.

• An action list may contain many actions (at least one). Within an action

list, no further filters are allowed. The actions determine what happens to

matching log messages.

• The exact appearance of log messages in the output may be controlled

through “templates”.

Rsyslog’s configuration can be found in the

/etc/rsyslog.conf

file. In this file you

may use three different styles of configuration setting in parallel:

• The traditional

/etc/syslog.conf

syntax (“sysklogd”).

• An obsolete rsyslog syntax (“legacy rsyslog”). You can recognise this by the

commands that start with dollar signs (

$

).

• The current rsyslog syntax (“RainerScript”). This is best suited for complex

situations.

The first two flavours are line-based. In the current syntax, line breaks are irrele-

vant.

For very simple applications you can still—and should!—use the sysklogd syn-

tax (as discussed in the previous sections). If you want to set configuration pa-

rameters or express complex control flows, RainerScript is more appropriate. You

should avoid the obsolete rsyslog syntax (even if various Linux distributions don’t

do this in their default configurations), except that various features of rsyslog are

only accessible using that syntax.

BAs usual, empty lines and comment lines will be ignored. Comment lines

include both lines (and parts of lines) that start with a

#

(the comment then

stops at the end of the line) and C-style comments that reach from a

/*

,

disregarding line breaks, until a

*/

.

BC-style comments may not be nested1, but

#

comments may occur inside C-

style comments. That makes C-style comments particularly useful to “com-

ment out” large swathes of a configuration file in order to make it invisible

to rsyslog.

Rsyslog offers various features that surpass those of BSD

syslogd

. For example,

you can use extended filter expressions for messages:

:msg, contains, "FOO" /var/log/foo.log

1You don’t get to do that in C, either, so it shouldn’t be a major nuisance.

20 1 System Logging

Extended filter expressions always consist of a colon at the left margin, a “prop-

erty” that rsyslog takes from the message, a filter operator (here,

contains

), and a

search term. In our example, all log messages whose text contains the character

sequence

FOO

will be written to the

/var/log/foo.log

file.

BApart from

msg

(the log message proper), the “properties” you may use in-

clude, for example,

hostname

(the name of the computer sending the mes-

sage),

fromhost

(the name of the computer that forwarded the message to

rsyslog),

pri

(the category and priority of the message as an undecoded

number),

pri-text

(the category and priority as a text string, with the num-

ber appended, as in “

local0.err<133>

”),

syslogfacility

and

syslogseverity

as

well as

syslogfacility-text

and

syslogseverity-text

for direct access to the cat-

egory and priority,

timegenerated

(when the message was received) or

input-

name

(the rsyslog module name of the source of the message). There are

various others; look at rsyslog’s documentation.

BThe allowable comparison operators are

contains

,

isequal

,

startswith

,

regex

,

and

eregex

. These speak for themselves, except for the latter two—

regex

con-

siders its parameter as a simple and

eregex

as an “extended” regular expres-

sion according to POSIX. All comparison operators take upper and lower

case into account.

AThe

startswith

comparison is useful because it is considerably more efficient

than a regular expression that is anchored to the start of the message (as

long as you’re looking for a constant string, anyway). You should, however,

be careful, because what you consider the start of the message and what

rsyslog thinks of that can be quite different. If rsyslog receives a message

via the syslog service, this will, for example, look like

<131>Jul 22 14:25:50 root: error found

As far as rsyslog is concerned,

msg

does not start (as one might naively as-

sume) at the

e

of

error

, but with the space character in front of it. So if you

are looking for messages that start with

error

, you should say

:msg, startswith, " error" /var/log/error.log

BThere is a nice addition on the “action side” of simple rules: With traditional

syslogd

, you have already seen that an entry like

local0.* @red.example.com

will forward log messages to a remote host via the (UDP-based) syslog pro-

tocol. With rsyslog, you may also write

local0.* @@red.example.com

to transmit log messages via TCP. This is potentially more reliable, especially

if firewalls are involved.

BAt the other end of the TCP connection, of course, there must be a suitably

configured rsyslog listening for messages. You can ensure this, for example,

via

module(load="imtcp" MaxSessions="500")

input(type="imtcp" port="514")

In the obsolete syntax,

1.5 Extended Possibilities: Rsyslog 21

$ModLoad imtcp

$InputTCPMaxSessions 500

$InputTCPServerRun 514

does the same thing.

ADo consider that only the UDP port 514 is officially reserved for the syslog

protocol. The TCP port 514 is really used for a different purpose2. You can

specify a different port just in case:

local0.* @@red.example.com:10514

(and that works for UDP, too, if necessary). The changes required on the

server side will be easy for you to figure out on your own.

The next level of complexity are filters based on expressions that may contain

arbitrary Boolean, arithmetic, or string operations. These always start with an

if

at the very left of a new line:

if $syslogfacility-text == "local0" and $msg startswith " FOO"

and ($msg contains "BAR" or $msg contains "BAZ")

then /var/log/foo.log

(in your file this should all be on one line). With this rule, messages of category

local0

will be written to the

/var/log/foo.log

file as long as they start with

FOO

and

also contain either

BAR

or

BAZ

(or both). (Watch for the dollar signs at the start of

the property names.)

Rsyslog supports a large number of modules that determine what should hap-

pen to log messages. You might, for example, forward important messages by

e-mail. To do so, you might put something like

module(load="ommail")

template(name="mailBody" type="string" string="ALERT\\r\\n%msg%")

if $msg contains "disk error" then {

action(type="ommail" server="mail.example.com" port="25"

mailfrom="rsyslog@example.com" mailto="admins@example.com"

subject.text="disk error detected"

body.enable="on" template="mailBody"

action.execonlyonceeveryinterval="3600")

}

into your

/etc/rsyslog.conf

.

BIf you have an older version of rsyslog (before 8.5.0) you will need to use the

obsolete syntax to configure the

ommail

module. That might, for example,

look like

$ModLoad ommail

$ActionMailSMTPServer mail.example.com

$ActionMailFrom rsyslog@example.com

$ActionMailTo admins@example.com

$template mailSubject,"disk error detected"

$template mailBody,"ALERT\\r\\n%msg%"

$ActionMailSubject mailSubject

$ActionExecOnlyOnceEveryInterval 3600

if $msg contains "disk error" then :ommail:;mailBody

$ActionExecOnlyOnceEveryInterval 0q

2… even though nobody nowadays is still interested in the remote-shell service. Nobody reason-

able, anyway.

22 1 System Logging

BRsyslog’s SMTP implementation is fairly primitive, since it supports neither

encryption nor authentication. This means that the mail server you specify

in the rsyslog configuration must be able to accept mail from rsyslog even

without encryption or authentication.

By the way, rsyslog can handle Linux kernel log messages directly. You simply

need to enable the

imklog

input module:

module(load="imklog")

or (obsolete syntax)

$ModLoad imklog

A separate

klogd

process is not necessary.

Detailed information on rsyslog is available, for example, in the online docu-

mentation [rsyslog].

Exercises

C1.6 [!3] (If your distribution doesn’t use rsyslog already.) Install rsyslog and

create a configuration that is as close to your existing

syslogd

configuration

as possible. Test it with (for example)

logger

. Where do you see room for

improvement?

C1.7 [2] PAM, the login and authentication system, logs sign-ons and sign-

offs in the following format:

kdm: :0[5244]: (pam_unix) session opened for user hugo by (uid=0)

kdm: :0[5244]: (pam_unix) session closed for user hugo

Configure rsyslog such that whenever a particular user (e. g. you) logs on

or off, a message is displayed on the system administrator’s (

root

’s) terminal

if they are logged on. (Hint: PAM messages appear in the

authpriv

category.)

C1.8 [3] (Cooperate with another class member if necessary.) Configure rsys-

log such that all log messages from one computer are passed to another

computer by means of a TCP connection. Test this connection using

logger

.

1.6 The “next generation”: Syslog-NG

Syslog-NG (“NG” for “new generation”) is a compatible, but extended reim-

plementation of a syslog daemon by Balazs Scheidler. The main advantages ofmain advantages

Syslog-NG compared to the traditional

syslogd

include:

• Filtering of messages based on their content (not just categories and priori-

ties)

• Chaining of several filters is possible

• A more sophisticated input/output system, including forwarding by TCP

and to subprocesses

The program itself is called

syslog-ng

.

BFor syslog clients there is no difference: You can replace a

syslogd

with

Syslog-NG without problems.

You can find information about Syslog-NG in its manual pages as well as on

[syslog-ng]. This includes documentation as well as a very useful FAQ collection.

1.6 The “next generation”: Syslog-NG 23

Configuration file Syslog-NG reads its configuration from a file, normally

/etc/

syslog-ng/syslog-ng.conf

. Unlike

syslogd

, Syslog-NG distinguishes various “entry entry types

types” in its configuration file.

Global options These settings apply to all message sources or the Syslog-NG

daemon itself.

Message sources Sylog-NG can read messages in various ways: from Unix-

domain sockets or UDP like

syslogd

, but also, for example, from files, FIFOs,

or TCP sockets. Every message source is assigned a name.

Filters Filters are Boolean expressions based on internal functions that can, for

example, refer to the origin, category, priority, or textual content of a log

message. Filters are also named.

Message sinks Syslog-NG includes all logging methods of

syslogd

and then some.

Log paths A “log path” connects one or several message sources, filters, and

sinks: If messages arrive from the sources and pass the filter (or filters),

they will be forwarded to the specified sink(s). At the end of the day, the

configuration file consists of a number of such log paths.

Options You can specify various “global” options that control Syslog-NG’s gen-

eral behaviour or determine default values for individual message sources or

sinks (specific options for the sources or sinks take priority). A complete list is

part of the Syslog-NG documentation. The general options include various set-

tings for handling DNS and the forwarding or rewriting of messages’ sender host

names.

BIf Syslog-NG on host 𝐴receives a message from host 𝐵, it checks the

keep_hostnames()

option. If its value is

yes

,𝐵will be kept as the host name for

the log. If not, the outcome depends on the

chain_hostnames()

option; if this

is

no

, then 𝐴will be logged as the host name, if it is

yes

, then Syslog-NG will

log 𝐵

/

𝐴. This is particularly important if the log is then forwarded to yet

another host.

Message Sources In Syslog-NG, message sources are defined using the

source

keyword. A message source collects one or more “drivers”. To accomplish the

same as a “normal”

syslogd

, you would include the line

source src { unix-stream("/dev/log"); internal(); };

in your configuration; this tells Syslog-NG to listen to the Unix-domain socket

/dev/log

.

internal()

refers to messages that Syslog-NG creates by itself.

BA Syslog-NG message source corresponding to the

-r

option of

syslogd

might

look like this:

source s_remote { udp(ip(0.0.0.0) port(514)); };

Since that is the default setting,

source s_remote { udp(); };

would also do.

BWith

ip()

, you can let Syslog-NG listen on specific local IP addresses only.

With

syslogd

, this isn’t possible.

The following source specification lets Syslog-NG replace the

klogd

program:

source kmsg { file("/proc/kmsg" log_prefix("kernel: ")); };

BAll message sources support another parameter,

log_msg_size()

, which spec-

ifies the maximum message length in bytes.

24 1 System Logging

Table 1.3: Filtering functions for Syslog-NG

Syntax Description

facility(

⟨category⟩[

,

⟨category⟩

…

]

)

Matches messages with one of the listed

categories

level(

⟨priority⟩[

,

⟨priority⟩

…

]

)

Matches messages with one of the listed

priorities

priority(

⟨priority⟩[

,

⟨priority⟩

…

]

)

Same as

level()

program(

⟨regex⟩

)

Matches messages where the name of the

sending program matches ⟨regex⟩

host(

⟨regex⟩

)

Matches messages whose sending host

matches ⟨regex⟩

match(

⟨regex⟩

)

Matches messages which match the ⟨regex⟩

themselves

filter(

⟨name⟩

)

Invokes another filtering rule and returns

its value

netmask(

⟨IP address⟩

/

⟨netmask⟩

)

Checks whether the IP address is in the

given network

Filters Filters are used to sift through log messages or distribute them to various

sinks. They rely on internal functions that consider specific aspects of messages;

these functions can be joined using the logical operators,

and

,

or

, and

not

. A list of

possible functions is shown in table ??.

You might, for example, define a filter that matches all messages from host

green

containing the text

error

:

filter f_green { host("green") and match("error"); };

BWith the

level()

(or

priority()

function, you can specify either one or more

priorities separated by commas, or else a range of priorities like “

warn ..

emerg

”.

Message Sinks Like sources, sinks consist of various “drivers” for logging meth-

ods. For example, you can write messages to a file:

destination d_file { file("/var/log/messages"); };

You can also specify a “template” that describes in which format the message

should be written to the sink in question. When doing so, you can refer to

“macros” that make various parts of the message accessible. For instance:

destination d_file {

file("/var/log/$YEAR.$MONTH.$DAY/messages"

template("$HOUR:$MIN:$SEC $TZ $HOST [$LEVEL] $MSG\n")

template_escape(no)

create_dirs(yes)

);

};

The

$YEAR

,

$MONTH

, etc. macros will be replaced by the obvious values.

$TZ

is the cur-

rent time zone,

$LEVEL

the message priority, and

$MSG

the messaeg itself (including

the sender’s process ID). A complete list of macros is part of Syslog-NG’s docu-

mentation.

BThe

template_escape()

parameter controls whether quotes (

'

and

"

) should

be “escaped” in the output. This is important if you want to feed the log

messages to, say, an SQL server.

1.6 The “next generation”: Syslog-NG 25

Unlike

syslogd

, Syslog-NG allows forwarding messages using TCP. This is not

just more convenient when firewalls are involved, but also ensures that no log

messages can get lost (which might happen with UDP). You could define a TCP

forwarding sink like this:

destination d_tcp { tcp("10.11.12.13" port(514); localport(514)); };

BAlso very useful is forwarding messages to programs using

program()

.

Syslog-NG starts the program when it is started itself, and keeps it run-

ning until itself is stopped or it receives a

SIGHUP

. This is not just to increase

efficiency, but serves as a precaution against denial-of-service attacks—if

a new process is started for every new message, an attacker could shut off

logging by sending large amounts of matching log messages. (Other mes-

sages that would point to these shenanigans might then be dropped to the

floor.)

Log paths Log paths serve to bring sources, filters, and sinks together and to ac-

tually evaluate messages. They always start with the

log

keyword. Here are a few

examples based on rules you know already from our

/etc/syslog.conf

discussion:

# Prerequisites

source s_all { internal(); unix-stream("/dev/log"); };

filter f_auth { facility(auth, authpriv); };

destination df_auth { file("/var/log/auth.log"); };

# auth,authpriv.* /var/log/auth.log

log {

source(s_all);

filter(f_auth);

destination(df_auth);

};

This rule causes all messages to do with authentication to be written to the

/var/

log/auth.log

file. Of course, with

syslogd

, this can be done in one line …

Here is a somewhat more complex example:

# kern.warn;*.err;authpriv.none /dev/tty10

filter f_nearly_all {

(facility(kern) and priority(warn .. emerg))

or (not facility(authpriv,kern));

};

destination df_tty { file("/dev/tty10"); };

log {

source(s_all);

filter(f_nearly_all);

destination(df_tty);

};

Here, too,

syslogd

’s version is a little more compact, but on the other hand this

description might be easier to follow.

BEvery message passes through all log paths, and will be logged by all match-

ing ones (this behaviour equals that of

syslogd

). If you want a message to not

be further considered after it has passed a particular log path, you can add

the

flags(final)

option to that path.

B

flags(final)

does not mean that the message is logged just once; it might

have been logged by other paths before the path in question.

26 1 System Logging

BWith

flags(fallback)

, you can declare a path to be the “default path”. This

path will only be considered for log messages that did not match any paths

that were not marked

flags(fallback)

.

Exercises

C1.9 [!3] Install Syslog-NG and create a configuration that is as close to your

existing

syslogd

configuration as possible. Test it with (for example)

logger

.

Where do you see room for improvement?

C1.10 [2] PAM, the login and authentication system, logs sign-ons and sign-

offs in the following format:

kdm: :0[5244]: (pam_unix) session opened for user hugo by (uid=0)

kdm: :0[5244]: (pam_unix) session closed for user hugo

Configure Syslog-NG such that whenever a particular user (e. g. you) logs

on or off, a message is displayed on the system administrator’s (

root

’s) ter-

minal if they are logged on. (Hint: PAM messages appear in the

authpriv

category.)

C1.11 [3] (Cooperate with another class member if necessary.) Configure

rsyslog such that all log messages from one computer are passed to another

computer by means of a TCP connection. Test this connection using

logger

.

Experiment with different settings for

keep_hostnames()

and

chain_hostnames()

.

1.7 The

logrotate

Program

Depending on the number of users and the number and type of running services,

the log files can grow fairly large fairly quickly. To keep the system from inun-

dation by garbage, you should on the one hand try to put the relevant directories

(e. g.,

/var/log

or

/var

) on their own partitions. On the other hand there is software

which checks the log files periodically according to various criteria such as the

size, truncates them and removes or archives old log files. This process is called

“rotation”, and one such program is

logrotate

.

logrotate

is not a daemon, but will usually be executed once a day (or so) using

cron

—or a similar service.

B

logrotate

refuses to modify a log file more than once a day, except if the

decision depend on the size of the log file, you’re using the

hourly

criterion,

or the

--force

option (

-f

for short) was specified with

logrotate

.

According to convention,

logrotate

is configured using the

/etc/logrotate.conf/etc/logrotate.conf

file and the files within the

/etc/logrotate.d

directory. The

/etc/logrotate.conf

file

/etc/logrotate.d

sets up general parameters, which can be overwritten by the files in

/etc/logrotate.

d

if necessary. In

/etc/logrotate.conf

, there is in particular the “

include /etc/logro-

tate.d

” parameter, which causes the files from that directory to be read in that

place as if they were part of the

/etc/logrotate.conf

file.

BIn principle,

logrotate

reads all the files named on the command line as con-

figuration files, and the content of files mentioned later overwrites that of

files mentioned earlier. The

/etc/logrotate.conf

thing is just a (reasonable)

convention which is put into action by means of a suitable invocation of

logrotate

in

/etc/cron.daily/logrotate

(or something equivalent).

1.7 The

logrotate

Program 27

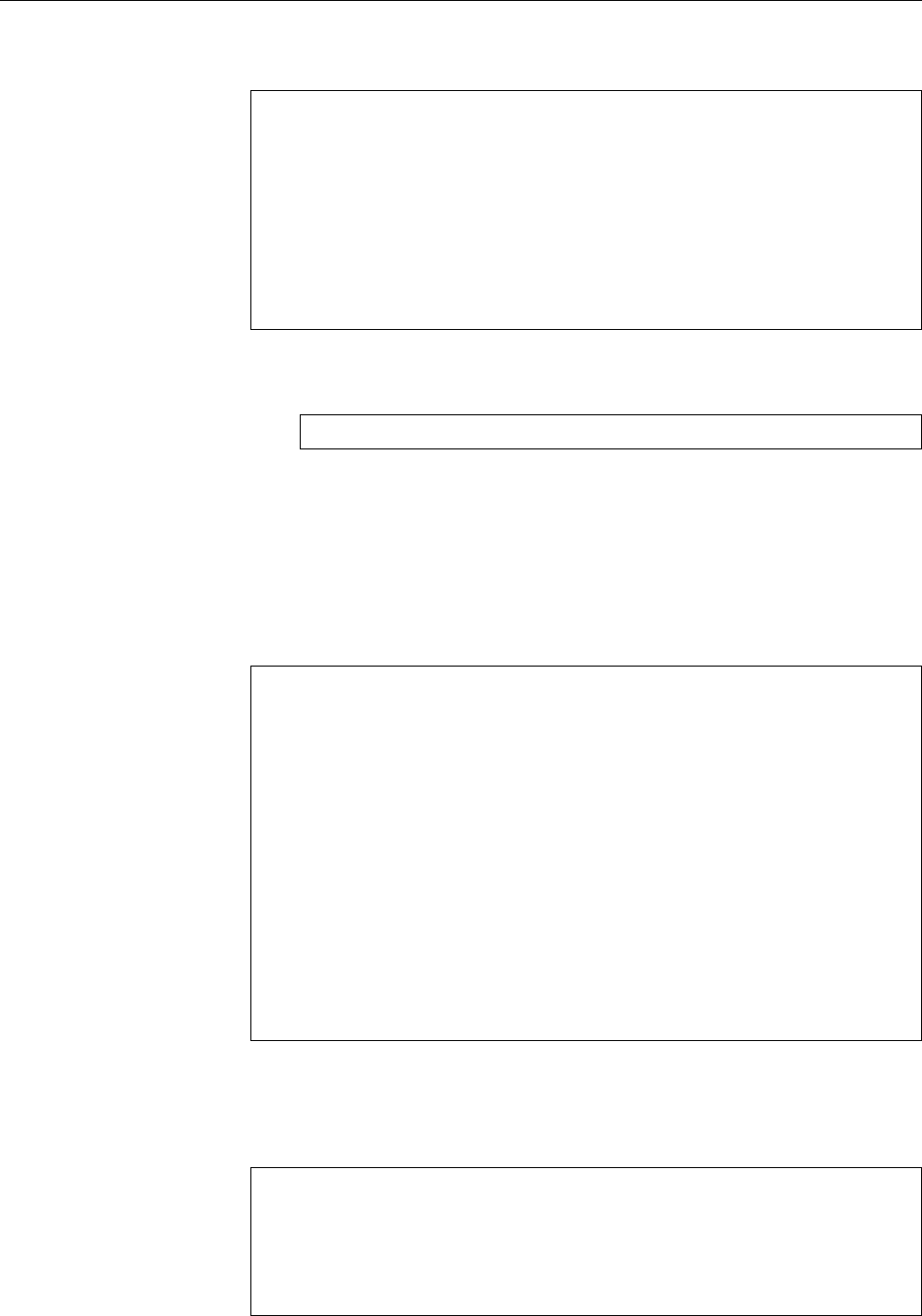

/var/log/syslog

{

rotate 7

daily

missingok

notifempty

delaycompress

compress

postrotate

invoke-rc.d rsyslog rotate >/dev/null

endscript

}

Figure 1.1: Example configuration for

logrotate

(Debian GNU/Linux 8.0)

BWe mention this here because it gives you the basic possibility to perform,

without undue hassle, separate

logrotate

runs for log files which aren’t part

of the regular configuration. If, for example, you have an extremely fast-

growing log file of, say, a popular web server, you can manage this using a

separate

logrotate

instance that runs more often than once a day.

logrotate

watches all files that it is told about by the aforementioned configu-

ration files, not just those created by

syslogd

. By way of example, figure 1.1 shows

an excerpt of a configuration file for rsyslog from Debian GNU/Linux 8.

The first line of the example specifies the files that this configuration applies

to (here,

/var/log/syslog

). You may enumerate several files or specify shell search

patterns. After that, inside curly braces, there is a block of directives that define

how

logrotate

should deal with the given files.

BTypically,

/etc/logrotate.conf

contains directives that are outside of a brace-

delimited block. These directives serve as defaults that apply to all log files

in the configuration, unless something more specific is given in their own

blocks of directives.

“

rotate 7

” means that at most seven old versions of each log file will be kept. old versions

When this maximum is reached, the oldest version of the log file will be deleted.

BIf you specify an address using

mail

, files will not be deleted but instead be

sent to the address in question.

B“

rotate 0

” deletes “rotated” log messages outright without keeping them at

all.

The rotated files are numbered in sequence, this means that if the current version

of the file is called

/var/log/syslog

, the immediately preceding version will be

/var/

log/syslog.1

, the version preceding that will be

/var/log/syslog.2

, and so on.

BYou may use the date instead of the sequential numbers. This means that

if today is July 20, 2015, and your

logrotate

run takes place daily in the

wee hours, the immediately preceding version of the file is not called

/var/

log/syslog.1

but

/var/log/syslog-20150720

, the version preceding that will be

called

/var/log/syslog-20150719

, and so on. To use this you must specify the

“

dateext

” directive.

BUsing “

dateformat

”, you can control exactly how the date-based file exten-

sion should look like. To do so, you need to specify a string that may con-

tain the

%Y

,

%m

,

%d

, and

%s

keys. These stand for the (four-digit) year, calendar

month, and calendar day (in each case two digits and, if necessary, with a

leading zero) and the seconds since 1st January 1970, 12:00 am UTC. As you

can surmise from the previous paragraph, the default is “

-%Y%m%d

”.

28 1 System Logging

BWhen you use

dateformat

, you should note that

logrotate

does a lexicographic

sort of file names when rotating in order to find out which file is the oldest.

This works with “

-%Y%m%d

”, but not with “

-%d%m%Y

”.

“

daily

” means that log files should be rotated daily. Together with “

rotate 7

”Time periods

this implies that you always have access to last week’s logs.

BThere are also

weekly

,

monthly

, and

yearly

. With

weekly

, the file will be rotated

when the current day of the week is earlier than the day of the week of the

last rotation, or more than one week has passed since the last rotation (in

the end, this means that rotation will take place on the first day of the week,

which according to US custom is the Sunday). With

monthly

, the file will be

rotated on the first

logrotate

run of the month (usually on the first of the

month). With

yearly

, rotation takes place on the first

logrotate

run of the

year. Theoretically,

hourly

rotates the log file every hour, but since

logrotate

is normally only run once a day, you will have to arrange for it to be run

frequently enough.

BAn alternative criterion is “

size

”. This will rotate a log file when a certain

size has been exceeded. The file size is given as a parameter—without a

unit, it will be taken to mean bytes, while the units

k

(or

K

),

M

, and

G

stand for

kibibytes (210 bytes), mebibytes (220 bytes), or gibibytes (230 bytes), respec-

tively.

B“

size

” and the time-based criteria are mutually exclusive. This means that

if you specify a “

size

” criterion, rotation will depend solely on file size, no

matter when the file was last rotated.

BFile size and time can be used together by means of the “

maxsize

” and

“

minsize

” criteria. With “

maxsize

”, you can specify a size which will cause

logrotate

to rotate the file even if the next official date has not been reached.

With “

minsize

”, the file will only be rotated at the specified point in time if

it has exceeded the given size (small files will be skipped).

“

missingok

” suppresses error messages if a log file could not be found. (The defaulterror messages

is “

nomissingok

”.) “

notifempty

” does not rotate a file if it is empty (the default here

is “

ifempty

”).

“

compress

” lets you specify that rotated versions of the log file should be com-

pressed.

BThis is by default done with

gzip

unless you request a different command

using “

compresscmd

”. Options for that command (which you would otherwise

pass on its command line) can be defined with “

compressoptions

”. The default

for

gzip

is “

-6

”.

The “

delaycompress

” directive ensures that a freshly rotated file is not compressed

immediately after the rotation but only on the next run. While usually the se-

quence of files would look like

/var/log/syslog /var/log/syslog.1.gz /var/log/syslog.2.gz …

“

delaycompress

” would get you the sequence

/var/log/syslog /var/log/syslog.1 /var/log/syslog.2.gz …

(in other words,

/var/log/syslog.1

remains uncompressed). You need this setting

if there is a chance that the logging program (like rsyslog) might append data

to the file after it has been renamed (rotated)—this can happen because rsyslog

keeps the logfile open, and renaming the file is irrelevant as far as writing to it is

concerned.

This implies that you need to notify rsyslog that there is a new log file. This is

what the

1.7 The

logrotate

Program 29

postrotate

invoke-rc.d rsyslog rotate >/dev/null

endscript

directive is for. The shell commands between “

postrotate

” and “

endscript

” are ex-

ecuted by

logrotate

whenever the log file has been rotated.

The command itself is basically irrelevant (the idea counts), but what hap-

pens in the end is that rsyslog’s init script will be invoked, and it will send

SIGHUP

to the program. Other distributions also have their ways and means.

BThe

SIGHUP

then causes rsyslog to reread its configuration file and close and

reopen all log files. Since

/var/log/syslog

was renamed earlier on, rsyslog

opens a new log file under that name.—At this point,

logrotate

could com-

press the

/var/log/syslog.1

file, but it has no way of knowing when rsyslog

is really done with the file. This is why this is postponed until the file gets

rotated again.

Between “

postrotate

” and “

endscript

” there may be several lines with commands.

logrotate

concatenates them all and passes them to the shell (

/bin/sh

) as a whole.

The commands is passed the name of the log file as a parameter, and that is avail-

able there in the customary fashion as “

$1

”.

BThe

postrotate

commands are executed once for every log file enumerated at

the start of the configuration block. This means that the commands will per-

haps be executed several times. You can use the “

sharedscripts

” directive to

ensure that the commands are executed at most once for all files that match

the search pattern (or not at all, if none of the files needed to be rotated).

You can use “

create

” to make sure that the log file is recreated immediately after

the rotation and before the

postrotate

commands are executed. This uses the name

of the old file. The file mode, owner, and group derive from the parameters to

create

; the three possibilities are

create 600 root adm

File mode, user, and group

create root adm

Just user and group

create

Nothing at all

Unspecified file properties are taken from the previous version of the file.

This is just a selection of the most important configuration parameters. Study

logrotate

(8) to see the full list.

Exercises

C1.12 [!1] Which system-wide defaults does

logrotate

establish in your distri-

bution?

C1.13 [C]onsult

/etc/logrotate.conf

(and possibly

logrotate

(8)).

C1.14 [3] Configure

logrotate

such that your new

/var/log/test

log file will be

rotated once it exceeds a length of 100 bytes. 10 rotated versions should be

kept, these older versions should be compressed and should use a name

containing the date of their creation. Test your configuration.

30 1 System Logging

Commands in this Chapter

klogd

Accepts kernel log messages

klogd

(8) 14, 18

logger

Adds entries to the system log files

logger

(1) 16

logrotate

Manages, truncates and “rotates” log files

logrotate

(8) 26

logsurfer

Searches the system log files for important events

www.cert.dfn.de/eng/logsurf/

17

syslogd

Handles system log messages

syslogd

(8) 14

tail

Displays a file’s end

tail

(1) 17

xconsole

Displays system log messages in an X window

xconsole

(1) 14

xlogmaster

X11-based system monitoring program

xlogmaster

(1),

www.gnu.org/software/xlogmaster/

17

Summary

• The

syslogd

daemon can accept log messages from various system compo-

nents, write them to files, or pass them on to users or other computers.

• Log messages may belong to diverse facilities and can have various priori-

ties.

• Messages can be sent to

syslogd

using the

logger

command.

• Log files are generally placed in the

/var/log

directory.

• Syslog-NG is a compatible, but extended, reimplementation of a syslog dae-

mon.

•

logrotate

can be used to manage and archive log files.

Bibliography

RFC3164 C. Lonvick. “The BSD syslog Protocol”, August 2001.

http://www.ietf.org/rfc/rfc3164.txt

rsyslog “Welcome to Rsyslog”.

http://www.rsyslog.com/doc/v8-stable/index.html

syslog-ng “syslog-ng – Log Management Software”.

http://www.balabit.com/products/syslog_ng/

$ echo tux

tux

$ ls

hallo.c

hallo.o

$ /bin/su -

Password:

2

System Logging with Systemd and

“The Journal”

Contents

2.1 Fundamentals . . . . . . . . . . . . . . . . . . . . . 32

2.2 Systemd and journald . . . . . . . . . . . . . . . . . . 33

2.3 Log Inspection . . . . . . . . . . . . . . . . . . . . . 35

Goals

• Understanding the fundamentals of journald

• Being able to configure journald

• Being able to issue simple journal queries

• Understanding how journald handles log files

Prerequisites

• Basic knowledge of Linux system components

• Ability to handle configuration files

• Knowledge of the traditional system log service (chapter 1)

• Knowledge about systemd

adm2-journald.tex

(

0cd20ee1646f650c

)

32 2 System Logging with Systemd and “The Journal”

2.1 Fundamentals

Systemd is a far-reaching renewal of the software that ensures the basic operation

of a Linux computer. In a stricter sense, systemd is about starting and tracking

services and managing resources. Systemd also contains an approach to system

logging that is markedly different from the traditional syslogd method, the “jour-

nal”, and the software components necessary to implement it.

While in the traditional approach the syslog daemon accepts log messages on

UDP port 514 or the

/dev/log

socket, and (typically) writes them to text files (or

forwards them to other hosts where they are written to text files), in the systemd

world background services can simply write log messages to their standard error

output channel and systemd will arrange for them to be passed to the logging

service1. With systemd, log files are not text files (where every message is possibly

written to several files), but messages are written to a (binary) database that can

then be queried according to diverse criteria.