NVIDIA CUDA Programming Guide

Version 4.2

4/16/2012

NVIDIA CUDA™

NVIDIA CUDA C Programming Guide

ii CUDA C Programming Guide Version 4.2

Changes from Version 4.1

Updated Chapter 4, Chapter 5, and Appendix F to include information on devices of compute capability 3.0.

Replaced each reference to “processor core” with “multiprocessor” in Section 1.3.

Replaced Table A-1 by a reference to http://developer.nvidia.com/cuda-gpus.

Added new Section B.13 on the warp shuffle functions.

Table of Contents

Chapter 1. Introduction

1.1 From Graphics Processing to General-Purpose Parallel Computing

1.2 CUDA™: a General-Purpose Parallel Computing Architecture

1.3 A Scalable Programming Model

1.4 Document’s Structure

Chapter 2. Programming Model

2.1 Kernels

2.2 Thread Hierarchy

2.3 Memory Hierarchy

2.4 Heterogeneous Programming

2.5 Compute Capability

Chapter 3. Programming Interface

3.1 Compilation with NVCC

3.1.1 Compilation Workflow

3.1.1.1 Offline Compilation

3.1.1.2 Just-in-Time Compilation

3.1.2 Binary Compatibility

3.1.3 PTX Compatibility

3.1.4 Application Compatibility

3.1.5 C/C++ Compatibility

3.1.6 64-Bit Compatibility

3.2 CUDA C Runtime

3.2.1 Initialization

3.2.2 Device Memory

3.2.3 Shared Memory

3.2.4 Page-Locked Host Memory

3.2.4.1 Portable Memory

3.2.4.2 Write-Combining Memory

3.2.4.3 Mapped Memory

3.2.5 Asynchronous Concurrent Execution

3.2.5.1 Concurrent Execution between Host and Device

3.2.5.2 Overlap of Data Transfer and Kernel Execution

3.2.5.3 Concurrent Kernel Execution

3.2.5.4 Concurrent Data Transfers

3.2.5.5 Streams

3.2.5.6 Events

3.2.5.7 Synchronous Calls

3.2.6 Multi-Device System

3.2.6.1 Device Enumeration

3.2.6.2 Device Selection

3.2.6.3 Stream and Event Behavior

3.2.6.4 Peer-to-Peer Memory Access

3.2.6.5 Peer-to-Peer Memory Copy

3.2.7 Unified Virtual Address Space

3.2.8 Error Checking

3.2.9 Call Stack

3.2.10 Texture and Surface Memory

3.2.10.1 Texture Memory

3.2.10.2 Surface Memory

3.2.10.3 CUDA Arrays

3.2.10.4 Read/Write Coherency

3.2.11 Graphics Interoperability

3.2.11.1 OpenGL Interoperability

3.2.11.2 Direct3D Interoperability

3.2.11.3 SLI Interoperability

3.3 Versioning and Compatibility

3.4 Compute Modes

3.5 Mode Switches

3.6 Tesla Compute Cluster Mode for Windows

Chapter 4. Hardware Implementation

4.1 SIMT Architecture

4.2 Hardware Multithreading

Chapter 5. Performance Guidelines

5.1 Overall Performance Optimization Strategies

5.2 Maximize Utilization

5.2.1 Application Level

5.2.2 Device Level

5.2.3 Multiprocessor Level

5.3 Maximize Memory Throughput

5.3.1 Data Transfer between Host and Device

5.3.2 Device Memory Accesses

5.3.2.1 Global Memory

5.3.2.2 Local Memory

5.3.2.3 Shared Memory

5.3.2.4 Constant Memory

5.3.2.5 Texture and Surface Memory

5.4 Maximize Instruction Throughput

5.4.1 Arithmetic Instructions

5.4.2 Control Flow Instructions

5.4.3 Synchronization Instruction

Appendix A. CUDA-Enabled GPUs

Appendix B. C Language Extensions

B.1 Function Type Qualifiers

B.1.1 __device__

B.1.2 __global__

B.1.3 __host__

B.1.4 __noinline__ and __forceinline__

B.2 Variable Type Qualifiers

B.2.1 __device__

B.2.2 __constant__

B.2.3 __shared__

B.2.4 __restrict__

B.3 Built-in Vector Types

B.3.1 char1, uchar1, char2, uchar2, char3, uchar3, char4, uchar4, short1, ushort1, short2, ushort2, short3, ushort3, short4, ushort4, int1, uint1, int2, uint2, int3, uint3, int4, uint4, long1, ulong1, long2, ulong2, long3, ulong3, long4, ulong4, longlong1, ulonglong1, longlong2, ulonglong2, float1, float2, float3, float4, double1, double2

B.3.2 dim3

B.4 Built-in Variables

B.4.1 gridDim

B.4.2 blockIdx

B.4.3 blockDim

B.4.4 threadIdx

B.4.5 warpSize

B.5 Memory Fence Functions

B.6 Synchronization Functions

B.7 Mathematical Functions

B.8 Texture Functions

B.8.1 tex1Dfetch()

B.8.2 tex1D()

B.8.3 tex2D()

B.8.4 tex3D()

B.8.5 tex1DLayered()

B.8.6 tex2DLayered()

B.8.7 texCubemap()

B.8.8 texCubemapLayered()

B.8.9 tex2Dgather()

B.9 Surface Functions

B.9.1 surf1Dread()

B.9.2 surf1Dwrite()

B.9.3 surf2Dread()

B.9.4 surf2Dwrite()

B.9.5 surf3Dread()

B.9.6 surf3Dwrite()

B.9.7 surf1DLayeredread()

B.9.8 surf1DLayeredwrite()

B.9.9 surf2DLayeredread()

B.9.10 surf2DLayeredwrite()

B.9.11 surfCubemapread()

B.9.12 surfCubemapwrite()

B.9.13 surfCubemapLayeredread()

B.9.14 surfCubemapLayeredwrite()

B.10 Time Function

B.11 Atomic Functions

B.11.1 Arithmetic Functions

B.11.1.1 atomicAdd()

B.11.1.2 atomicSub()

B.11.1.3 atomicExch()

B.11.1.4 atomicMin()

B.11.1.5 atomicMax()

B.11.1.6 atomicInc()

B.11.1.7 atomicDec()

B.11.1.8 atomicCAS()

B.11.2 Bitwise Functions

B.11.2.1 atomicAnd()

B.11.2.2 atomicOr()

B.11.2.3 atomicXor()

B.12 Warp Vote Functions

B.13 Warp Shuffle Functions

B.13.1 Synopsis

B.13.2 Description

B.13.3 Return Value

B.13.4 Notes

B.13.5 Examples

B.13.5.1 Broadcast of a single value across a warp

B.13.5.2 Inclusive plus-scan across sub-partitions of 8 threads

B.13.5.3 Reduction across a warp

B.14 Profiler Counter Function

B.15 Assertion

B.16 Formatted Output

B.16.1 Format Specifiers

B.16.2 Limitations

B.16.3 Associated Host-Side API

B.16.4 Examples

B.17 Dynamic Global Memory Allocation

B.17.1 Heap Memory Allocation

B.17.2 Interoperability with Host Memory API

B.17.3 Examples

B.17.3.1 Per Thread Allocation

B.17.3.2 Per Thread Block Allocation

B.17.3.3 Allocation Persisting Between Kernel Launches

B.18 Execution Configuration

B.19 Launch Bounds

B.20 #pragma unroll

Appendix C. Mathematical Functions

C.1 Standard Functions

C.1.1 Single-Precision Floating-Point Functions

C.1.2 Double-Precision Floating-Point Functions

C.2 Intrinsic Functions

C.2.1 Single-Precision Floating-Point Functions

C.2.2 Double-Precision Floating-Point Functions

Appendix D. C/C++ Language Support

D.1 Code Samples

D.1.1 Data Aggregation Class

D.1.2 Derived Class

D.1.3 Class Template

D.1.4 Function Template

D.1.5 Functor Class

D.2 Restrictions

D.2.1 Qualifiers

D.2.1.1 Device Memory Qualifiers

D.2.1.2 Volatile Qualifier

D.2.2 Pointers

D.2.3 Operators

D.2.3.1 Assignment Operator

D.2.3.2 Address Operator

D.2.4 Functions

D.2.4.1 Function Parameters

D.2.4.2 Static Variables within Function

D.2.4.3 Function Pointers

D.2.4.4 Function Recursion

D.2.5 Classes

D.2.5.1 Data Members

D.2.5.2 Function Members

D.2.5.3 Constructors and Destructors

D.2.5.4 Virtual Functions

D.2.5.5 Virtual Base Classes

D.2.5.6 Windows-Specific

D.2.6 Templates

Appendix E. Texture Fetching

E.1 Nearest-Point Sampling

E.2 Linear Filtering

E.3 Table Lookup

Appendix F. Compute Capabilities

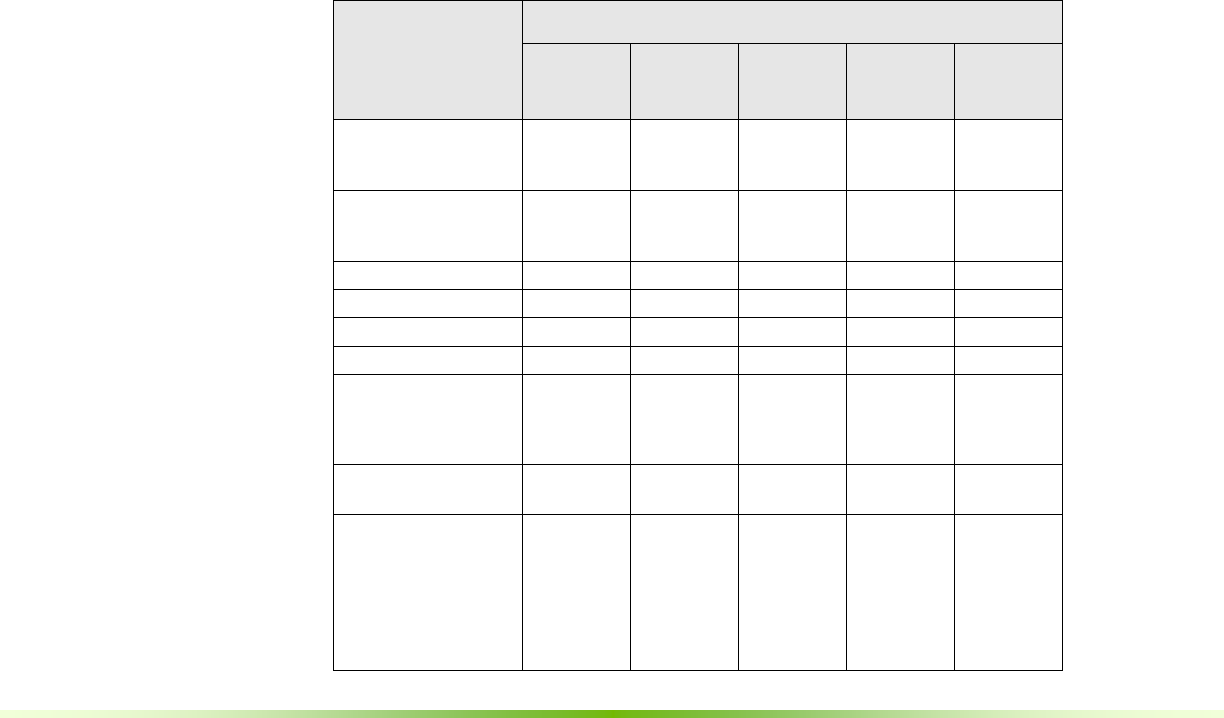

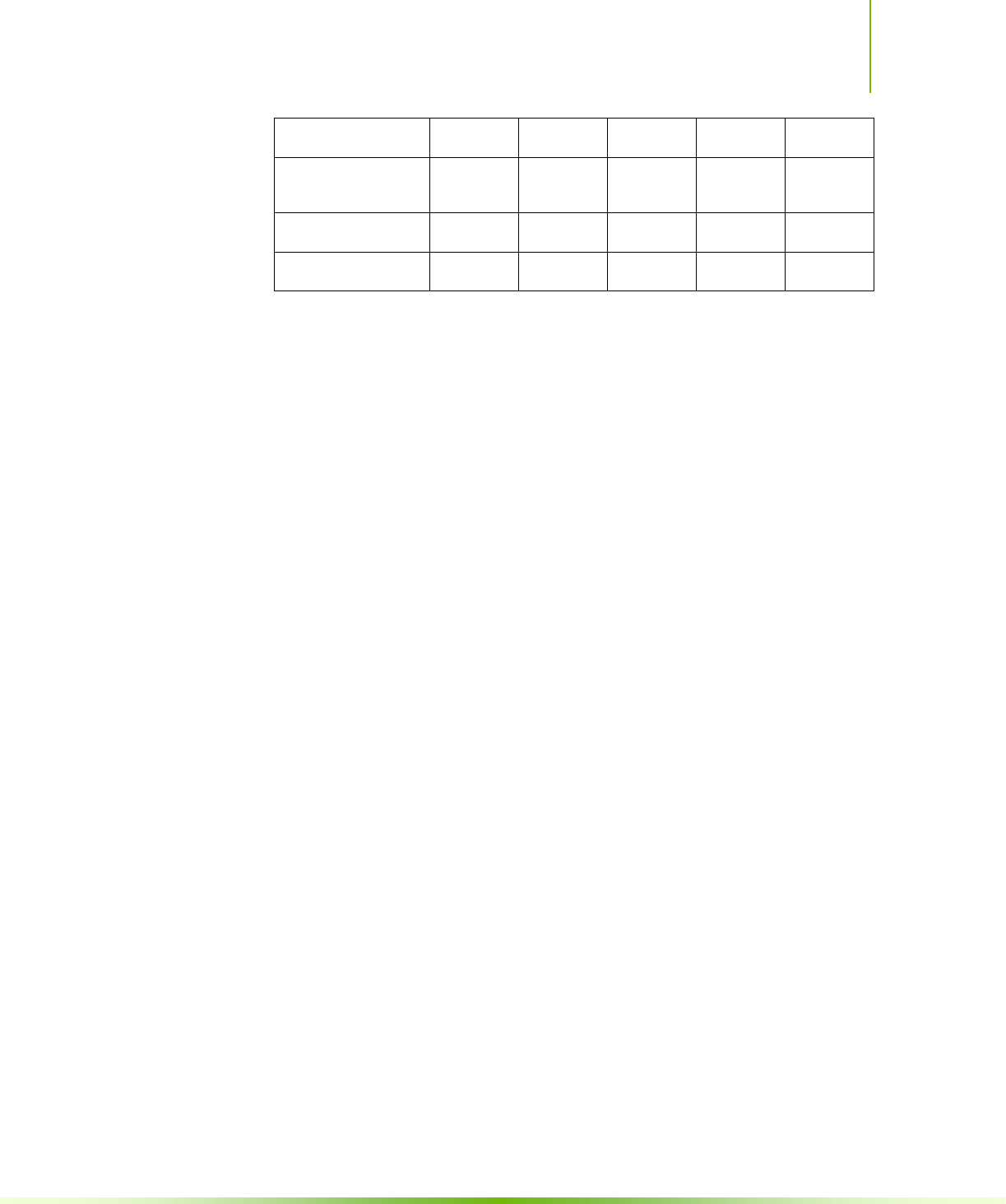

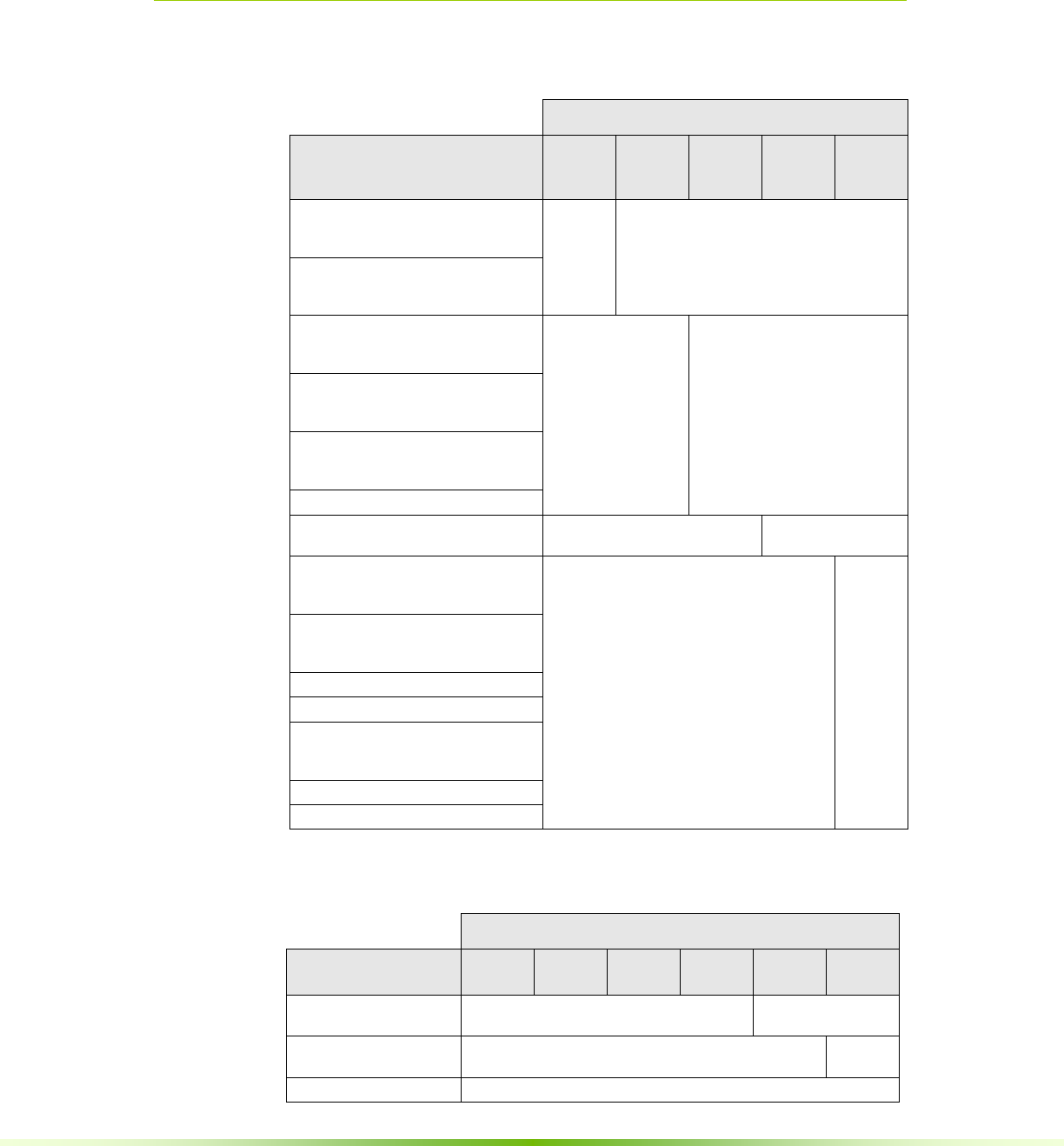

F.1 Features and Technical Specifications

F.2 Floating-Point Standard

F.3 Compute Capability 1.x

F.3.1 Architecture

F.3.2 Global Memory

F.3.2.1 Devices of Compute Capability 1.0 and 1.1

F.3.2.2 Devices of Compute Capability 1.2 and 1.3

F.3.3 Shared Memory

F.3.3.1 32-Bit Strided Access

F.3.3.2 32-Bit Broadcast Access

F.3.3.3 8-Bit and 16-Bit Access

F.3.3.4 Larger Than 32-Bit Access

F.4 Compute Capability 2.x

F.4.1 Architecture

F.4.2 Global Memory

F.4.3 Shared Memory

F.4.3.1 32-Bit Strided Access

F.4.3.2 Larger Than 32-Bit Access

F.4.4 Constant Memory

F.5 Compute Capability 3.0

F.5.1 Architecture

F.5.2 Global Memory

F.5.3 Shared Memory

F.5.3.1 64-Bit Mode

F.5.3.2 32-Bit Mode

Appendix G. Driver API

G.1 Context

G.2 Module

G.3 Kernel Execution

G.4 Interoperability between Runtime and Driver APIs

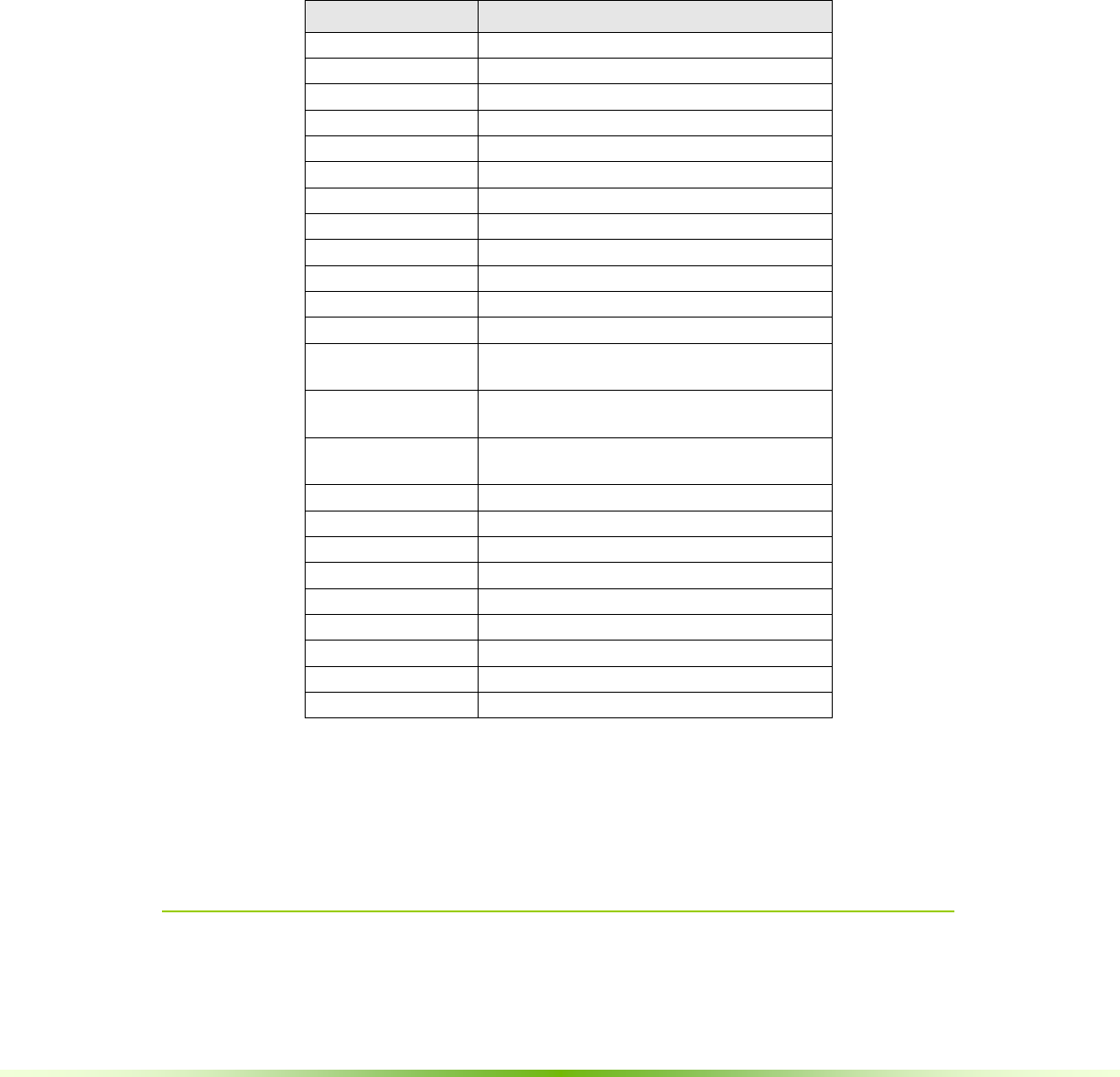

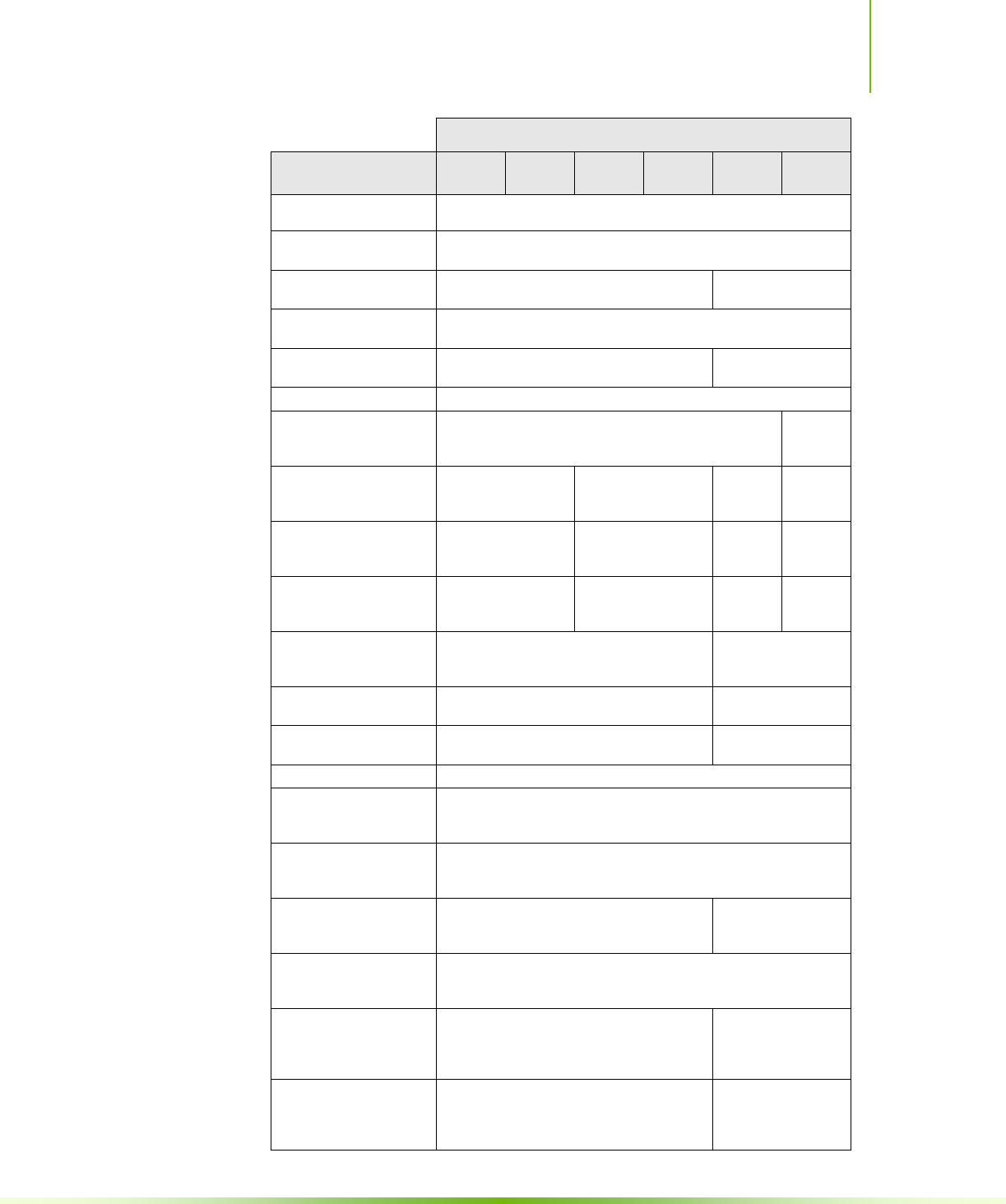

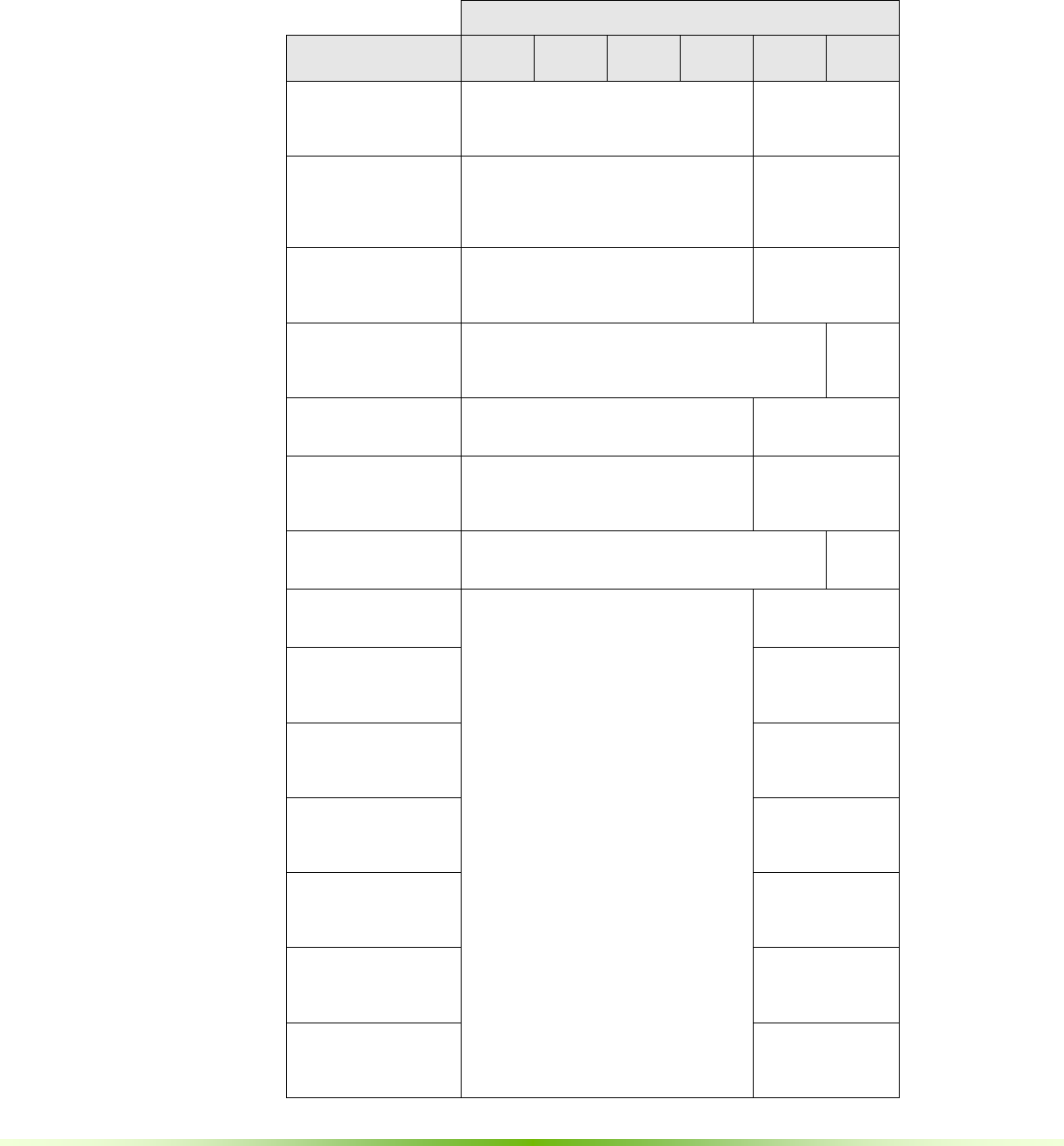

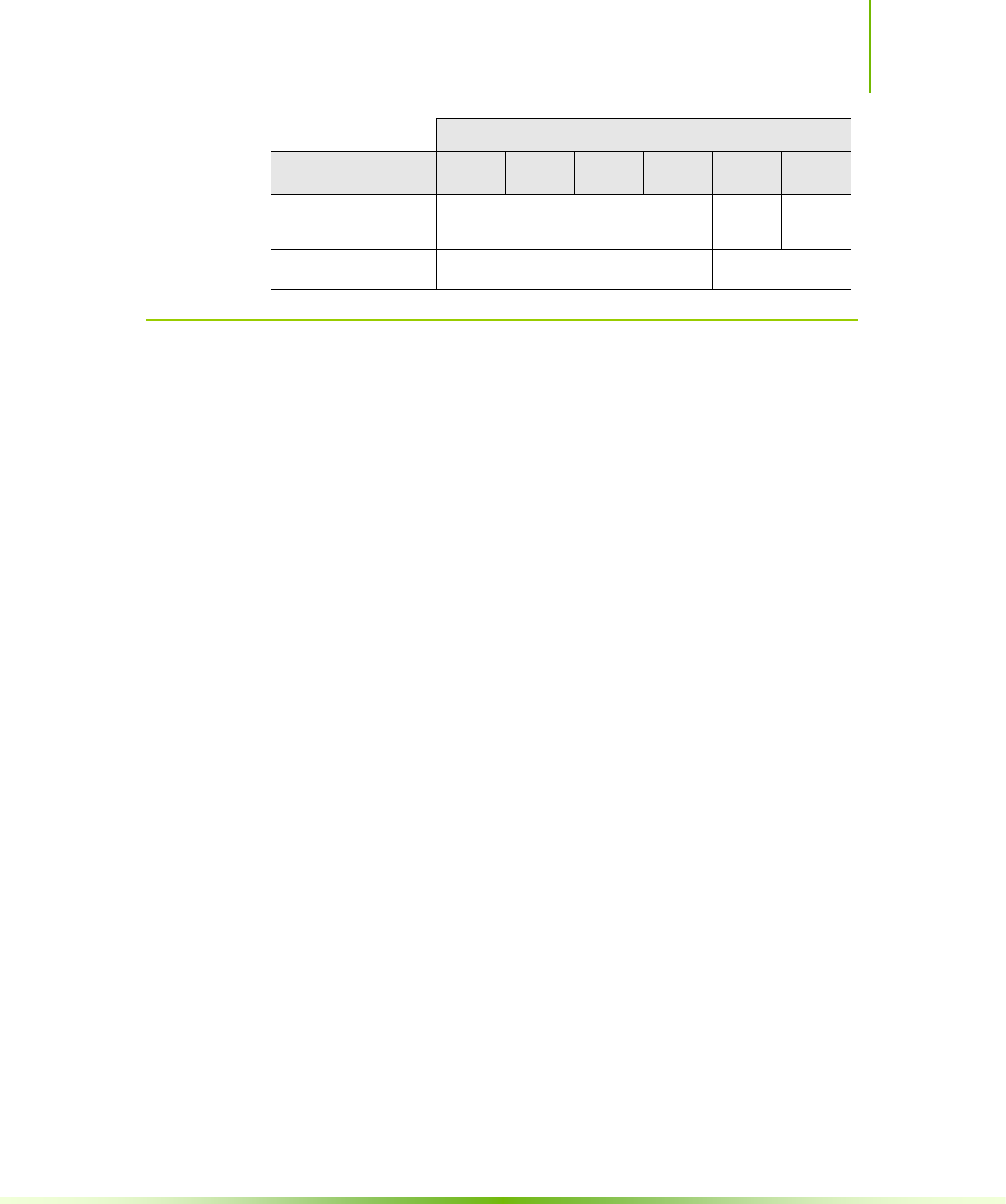

List of Figures

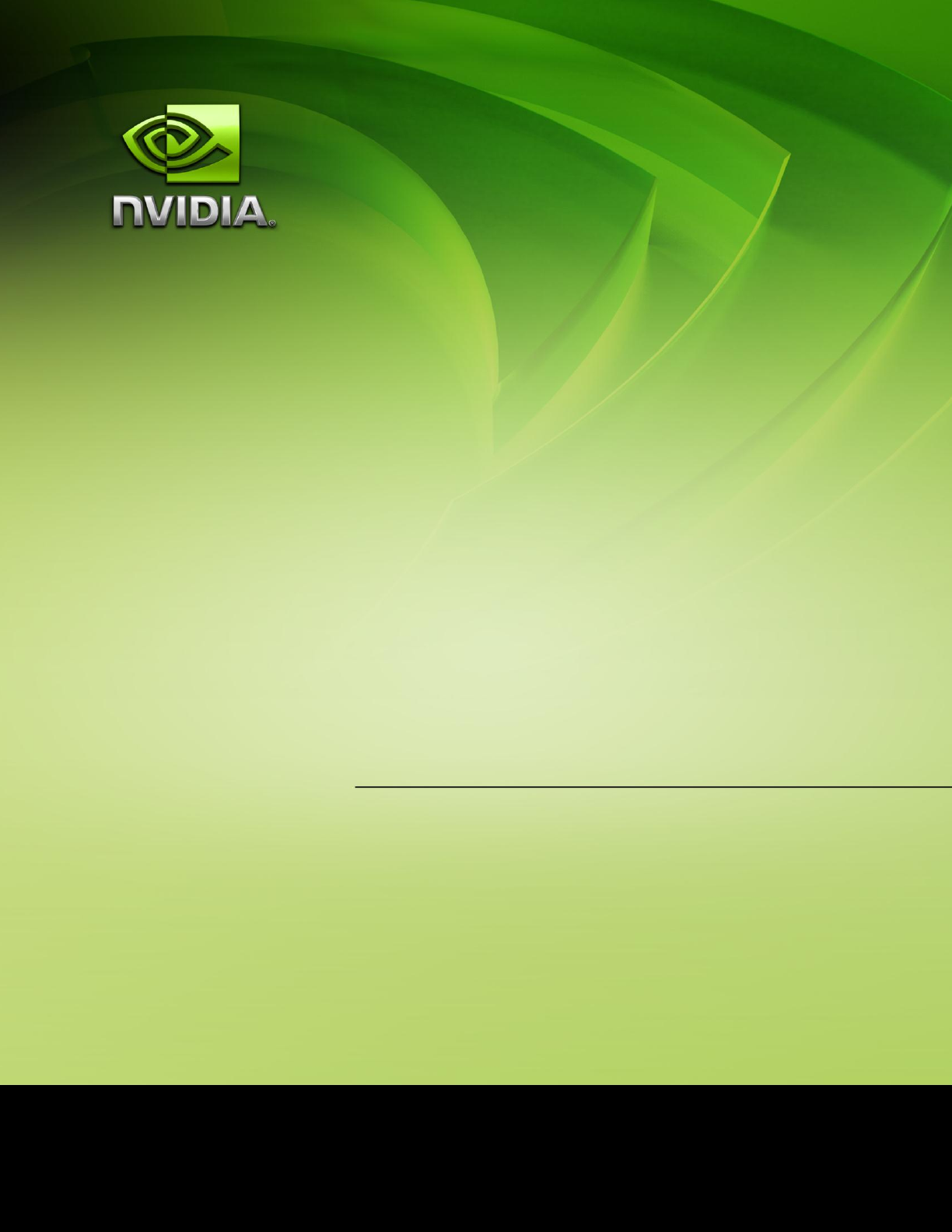

Figure 1-1. Floating-Point Operations per Second and Memory Bandwidth for the CPU and GPU

Figure 1-2. The GPU Devotes More Transistors to Data Processing

Figure 1-3. CUDA is Designed to Support Various Languages and Application Programming Interfaces

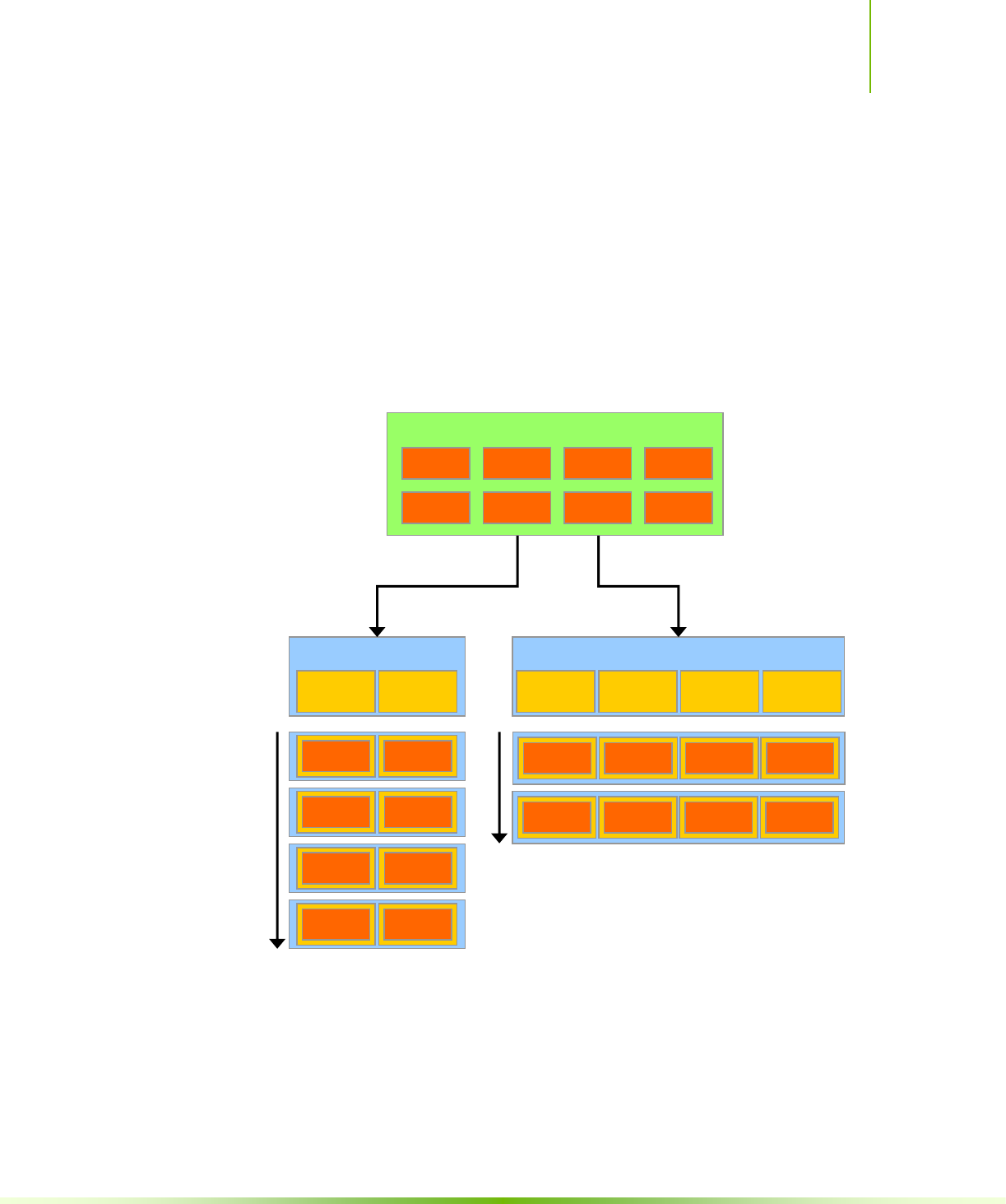

Figure 1-4. Automatic Scalability

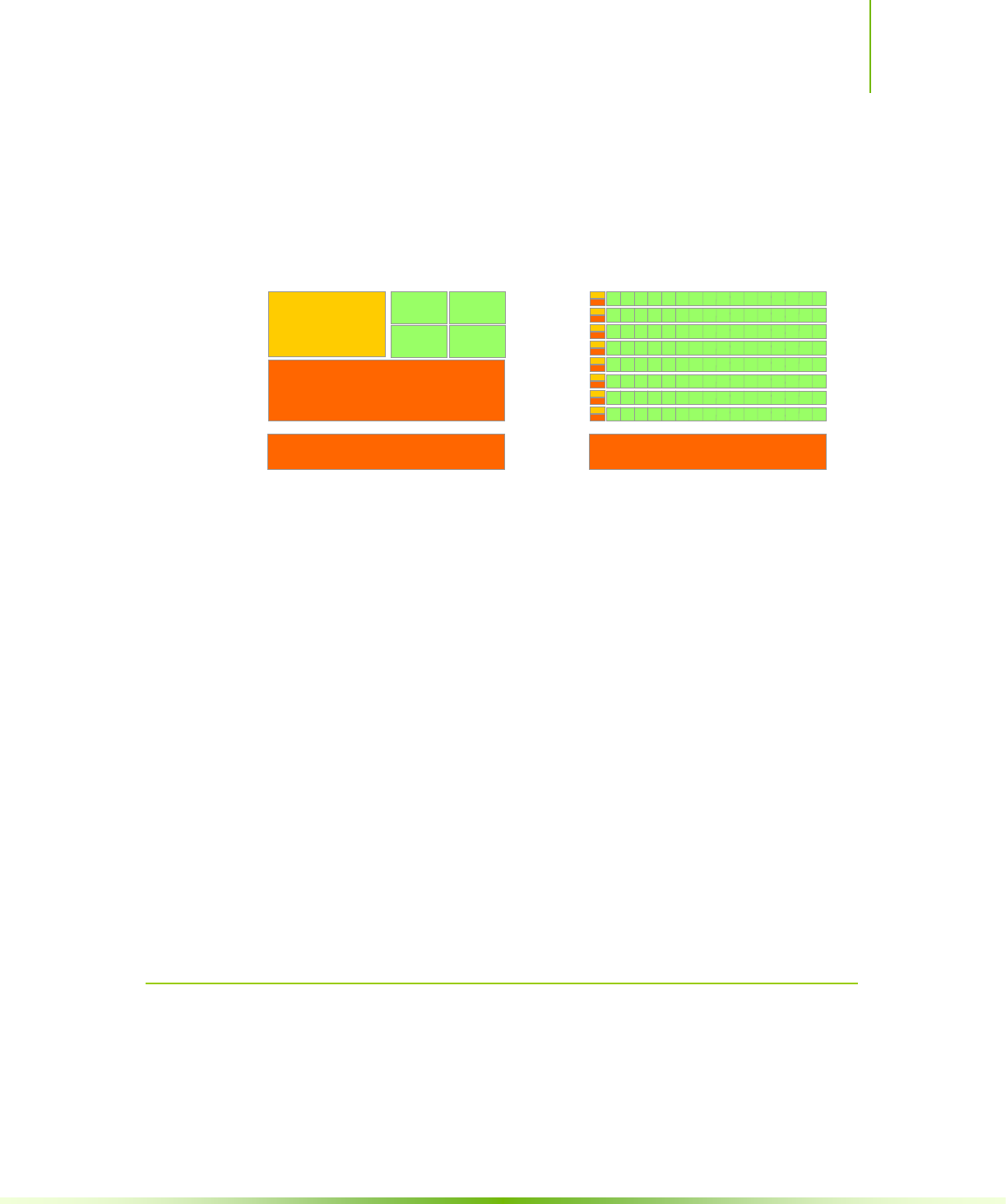

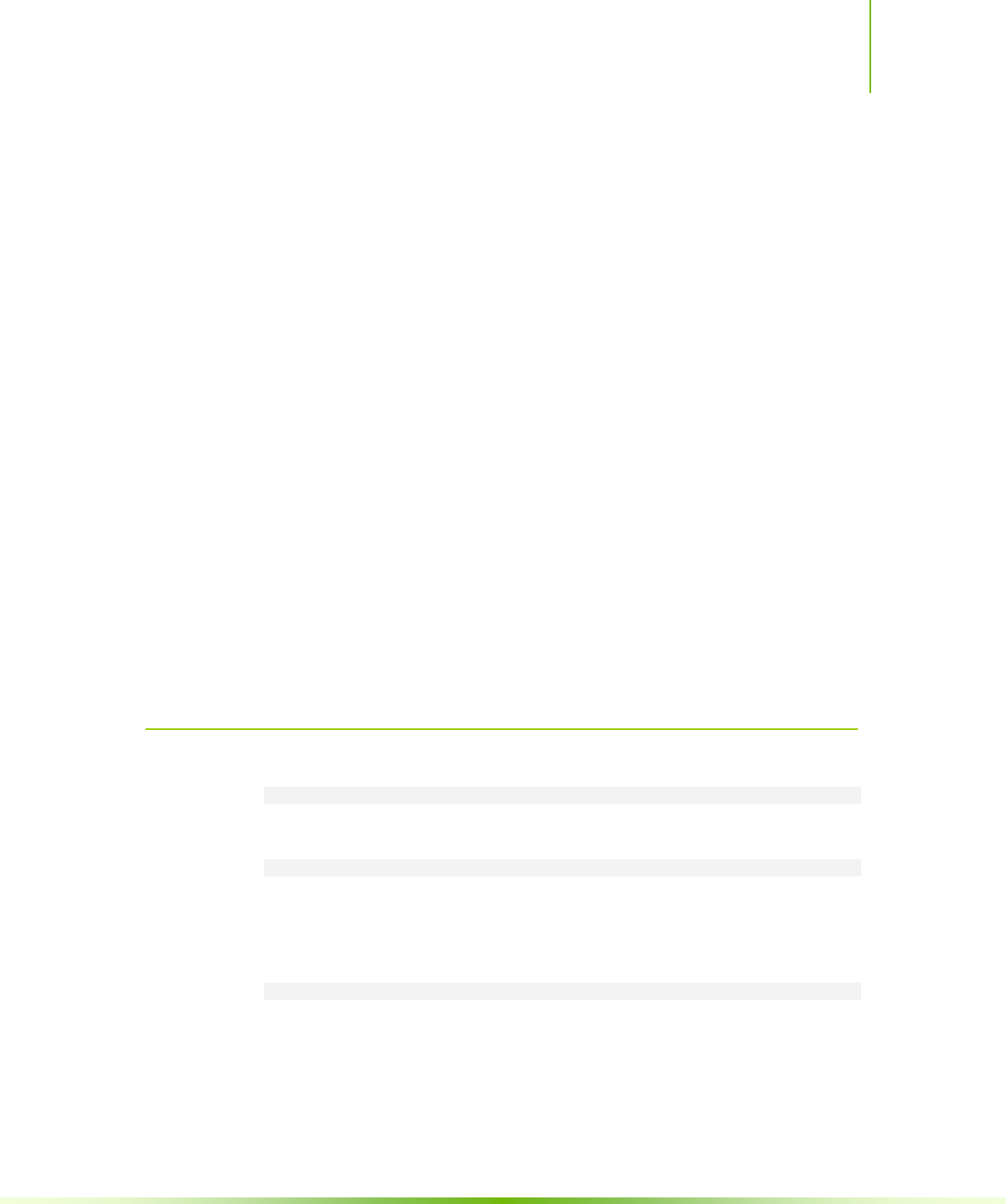

Figure 2-1. Grid of Thread Blocks

Figure 2-2. Memory Hierarchy

Figure 2-3. Heterogeneous Programming

Figure 3-1. Matrix Multiplication without Shared Memory

Figure 3-2. Matrix Multiplication with Shared Memory

Figure 3-3. The Driver API is Backward, but Not Forward Compatible

Figure E-1. Nearest-Point Sampling of a One-Dimensional Texture of Four Texels

Figure E-2. Linear Filtering of a One-Dimensional Texture of Four Texels in Clamp Addressing Mode

Figure E-3. One-Dimensional Table Lookup Using Linear Filtering

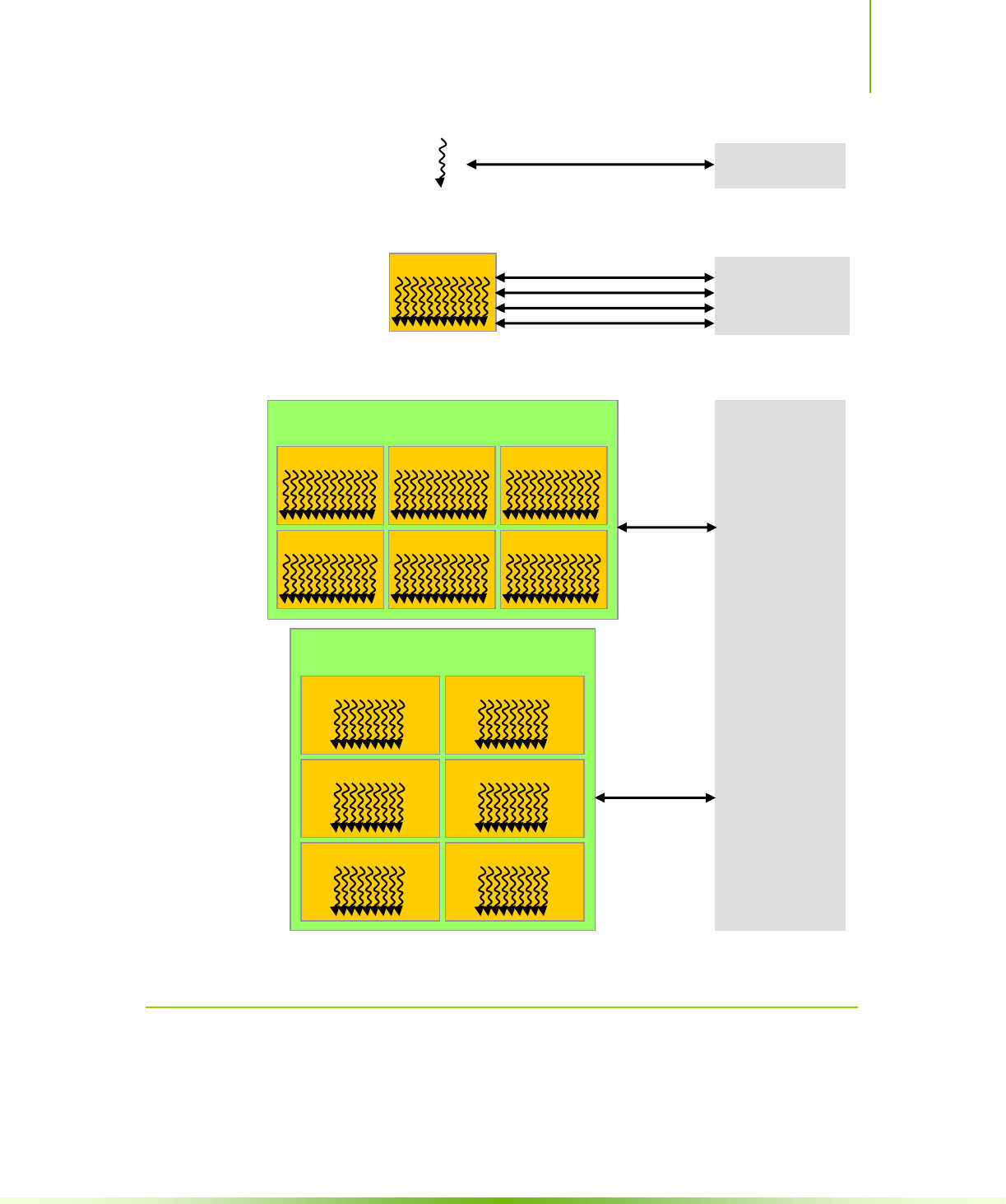

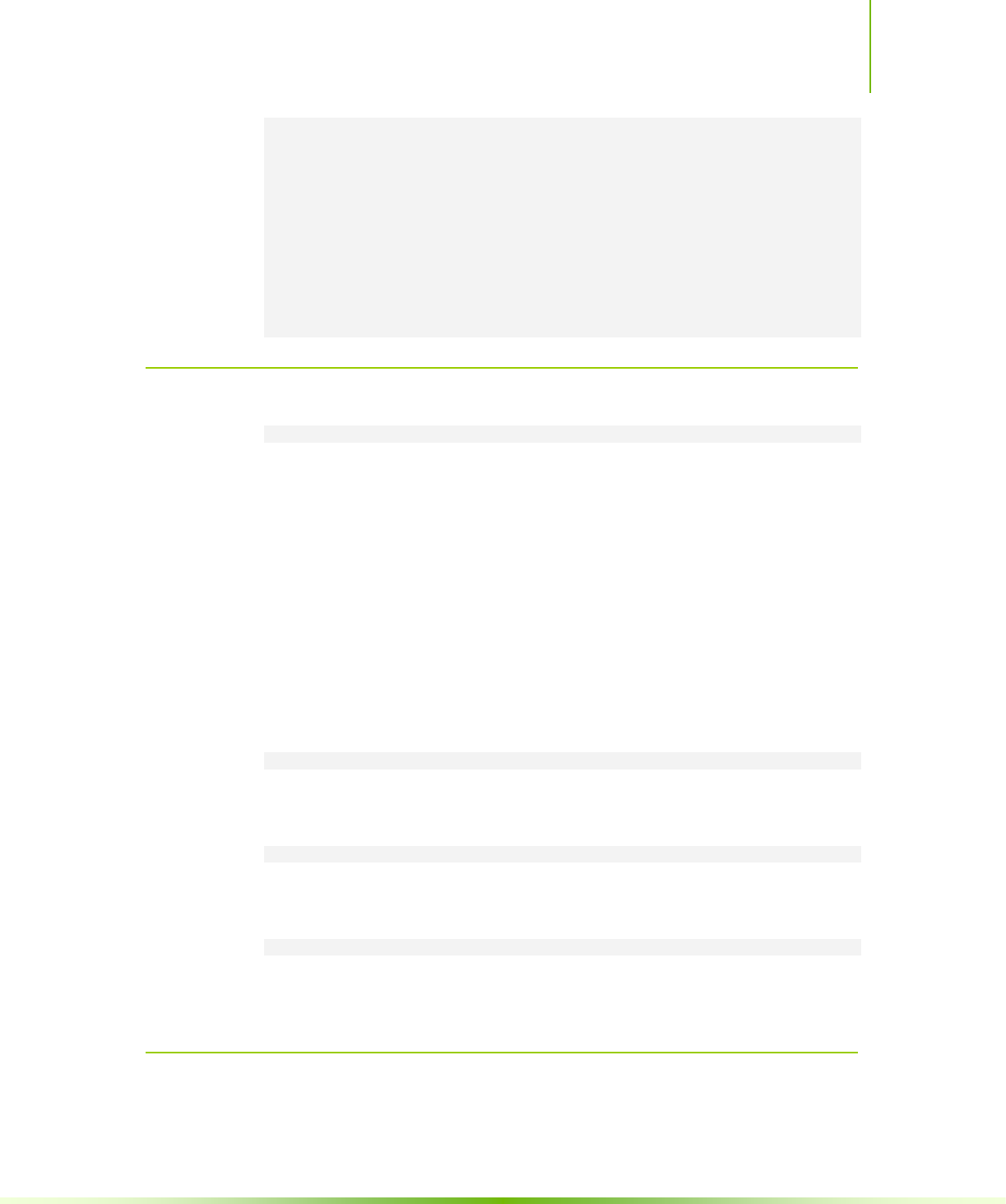

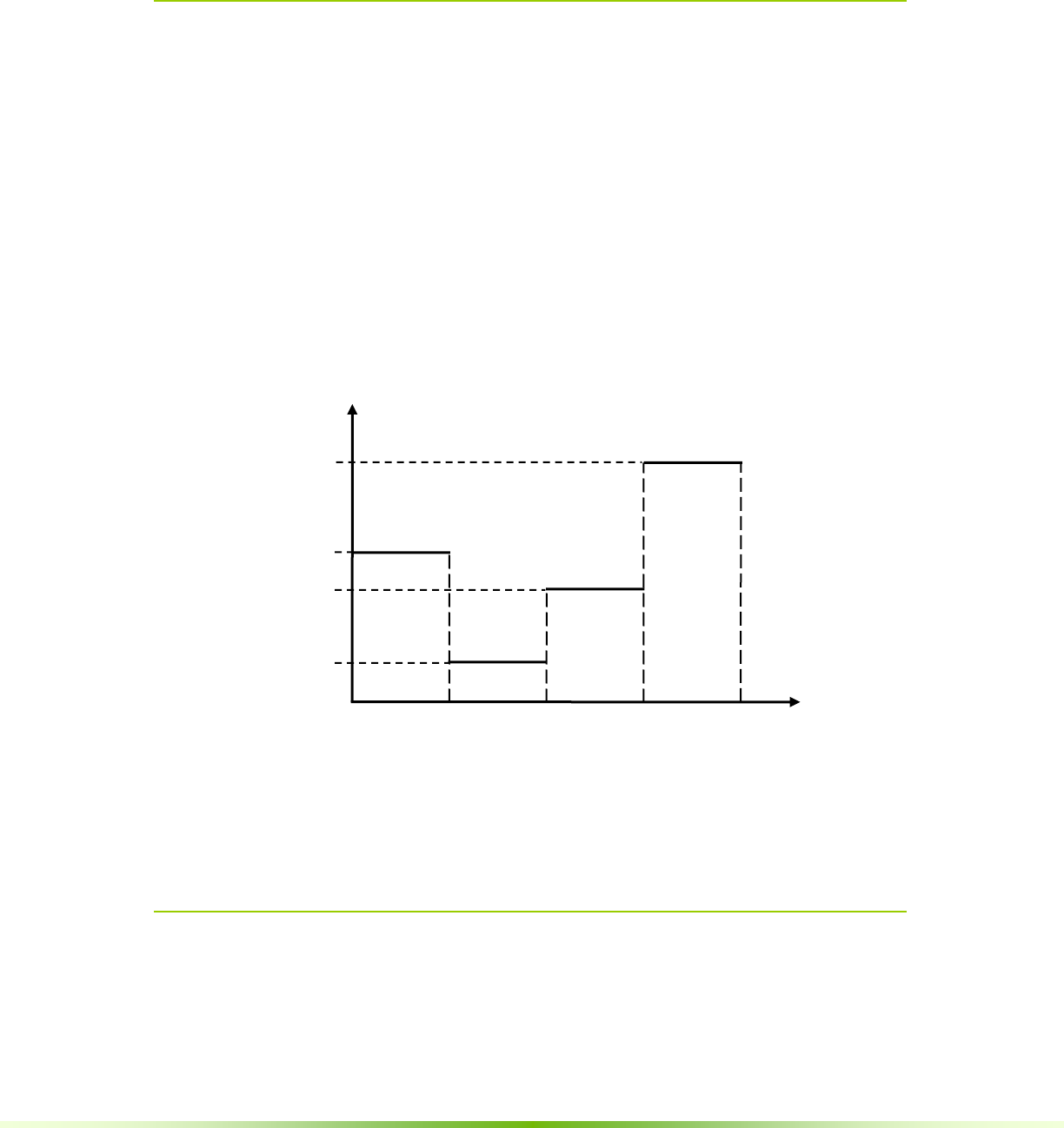

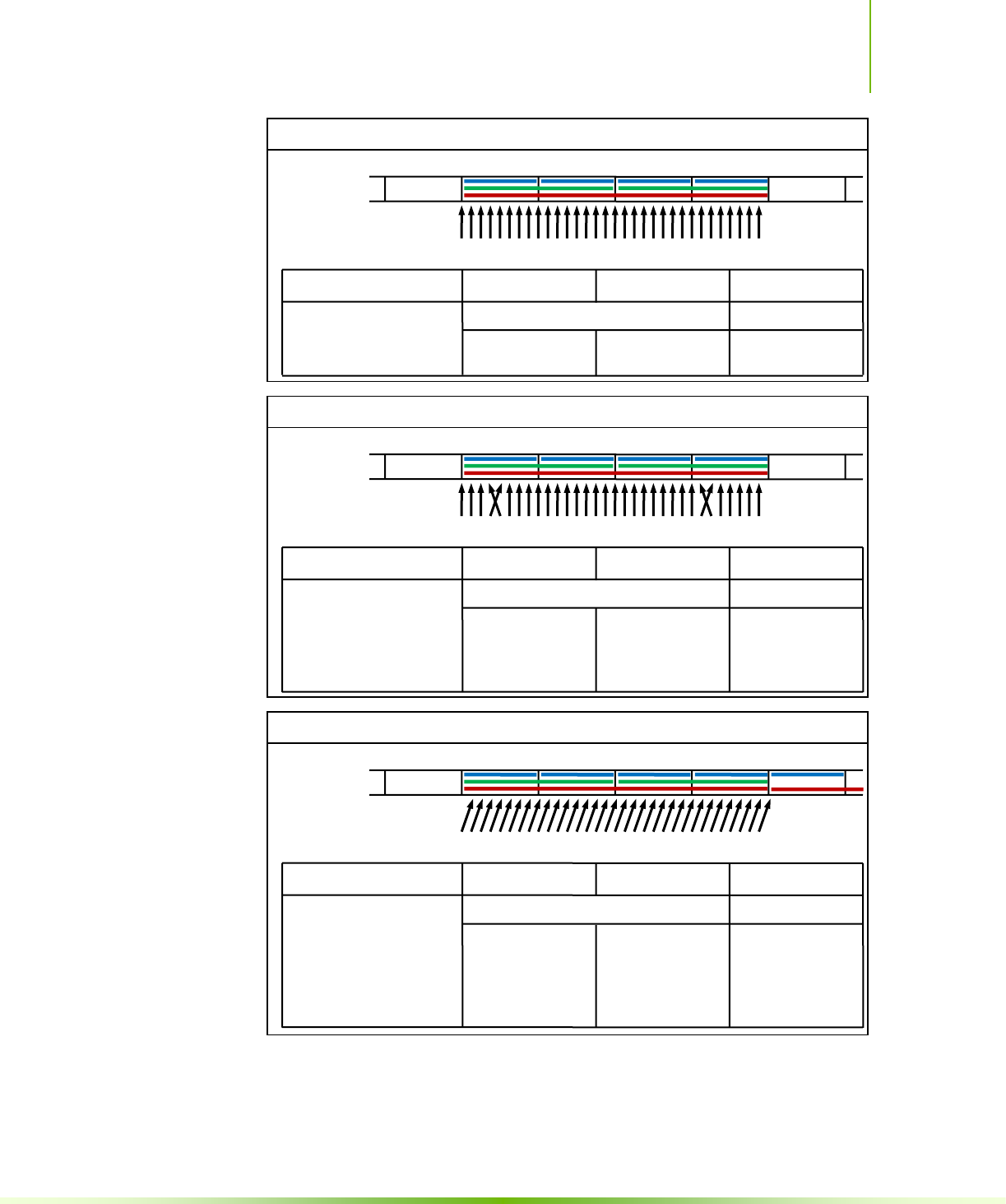

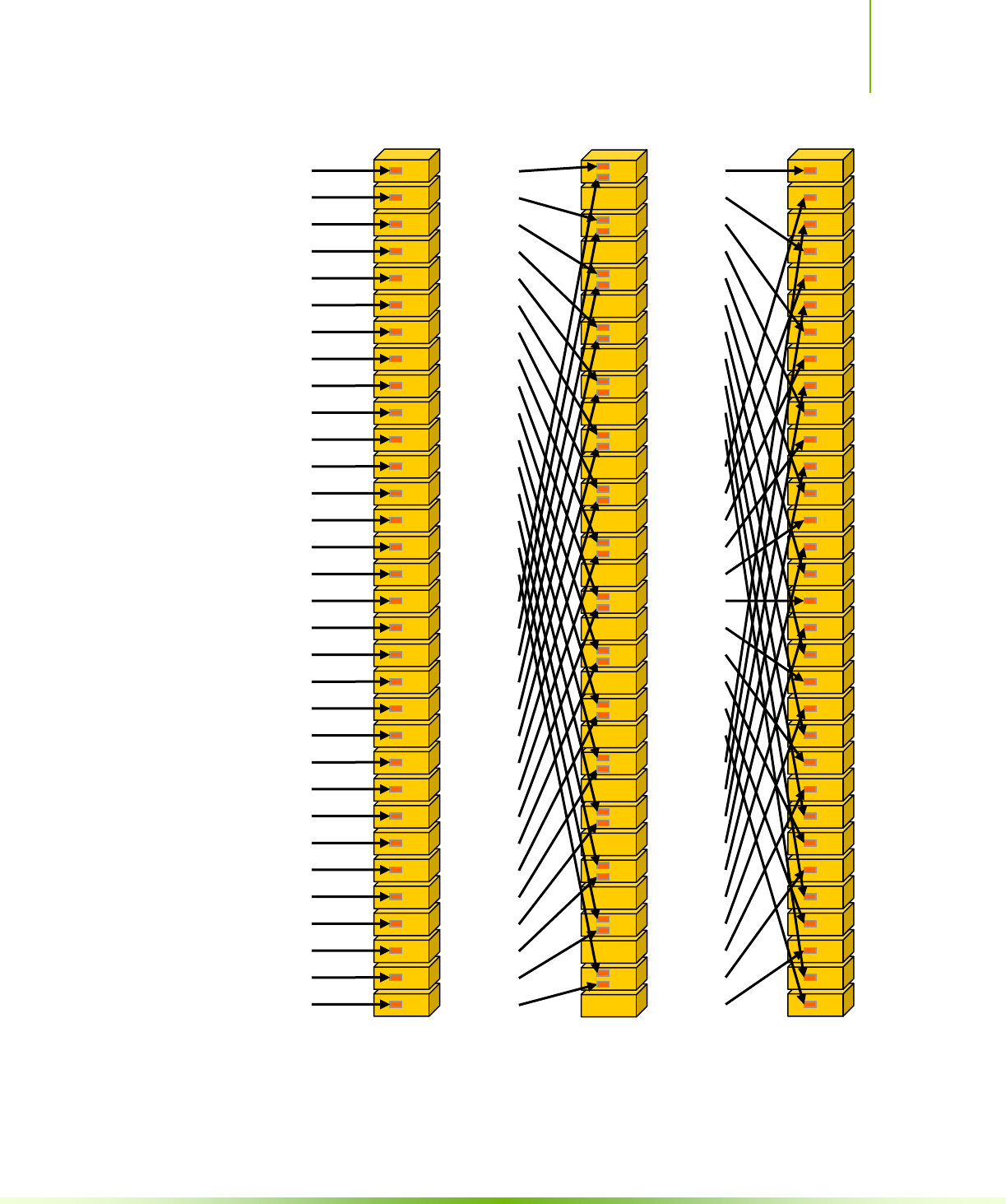

Figure F-1. Examples of Global Memory Accesses by a Warp, 4-Byte Word per Thread, and Associated Memory Transactions Based on Compute Capability

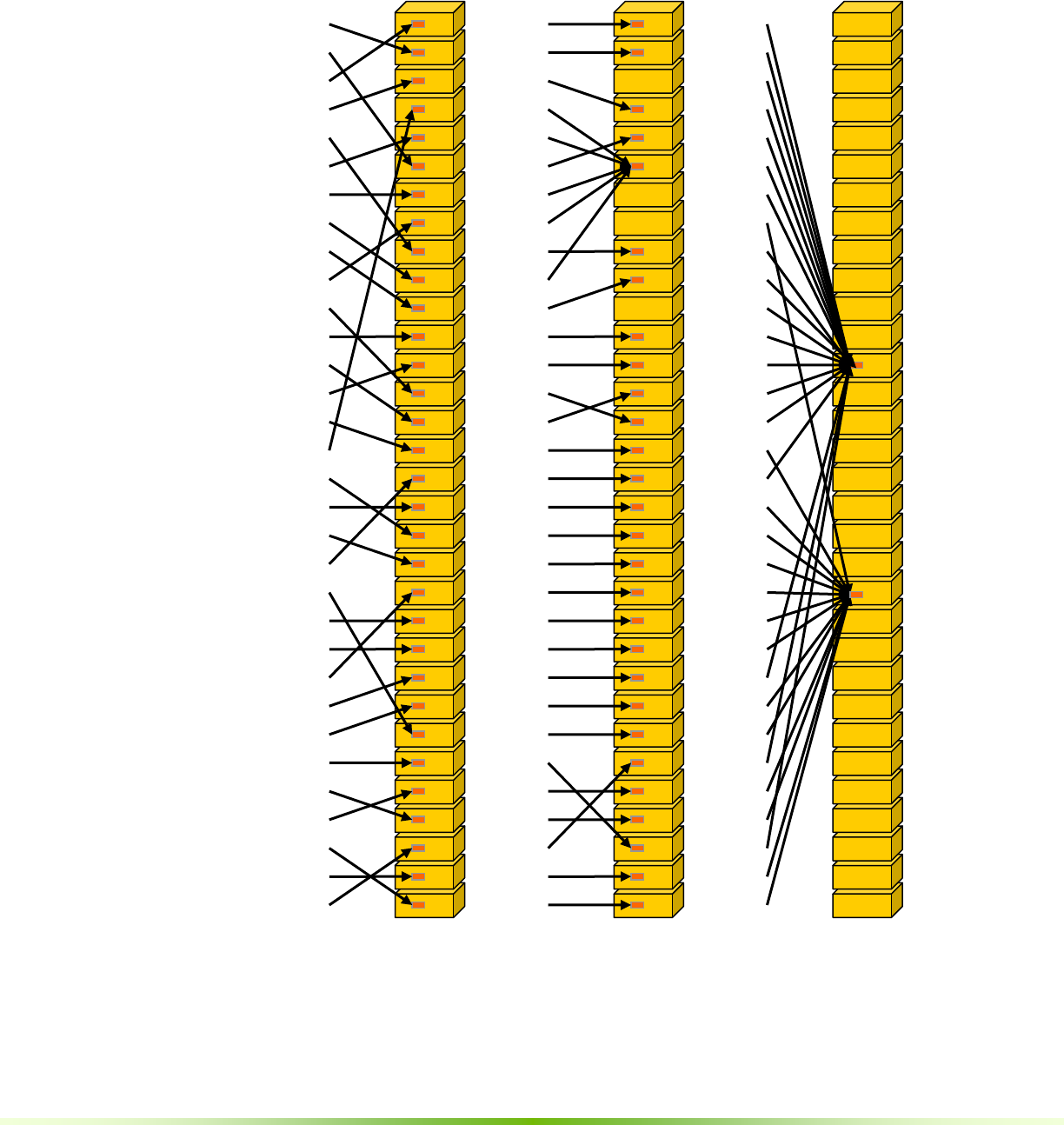

Figure F-2. Examples of Strided Shared Memory Accesses for Devices of Compute Capability 3.0

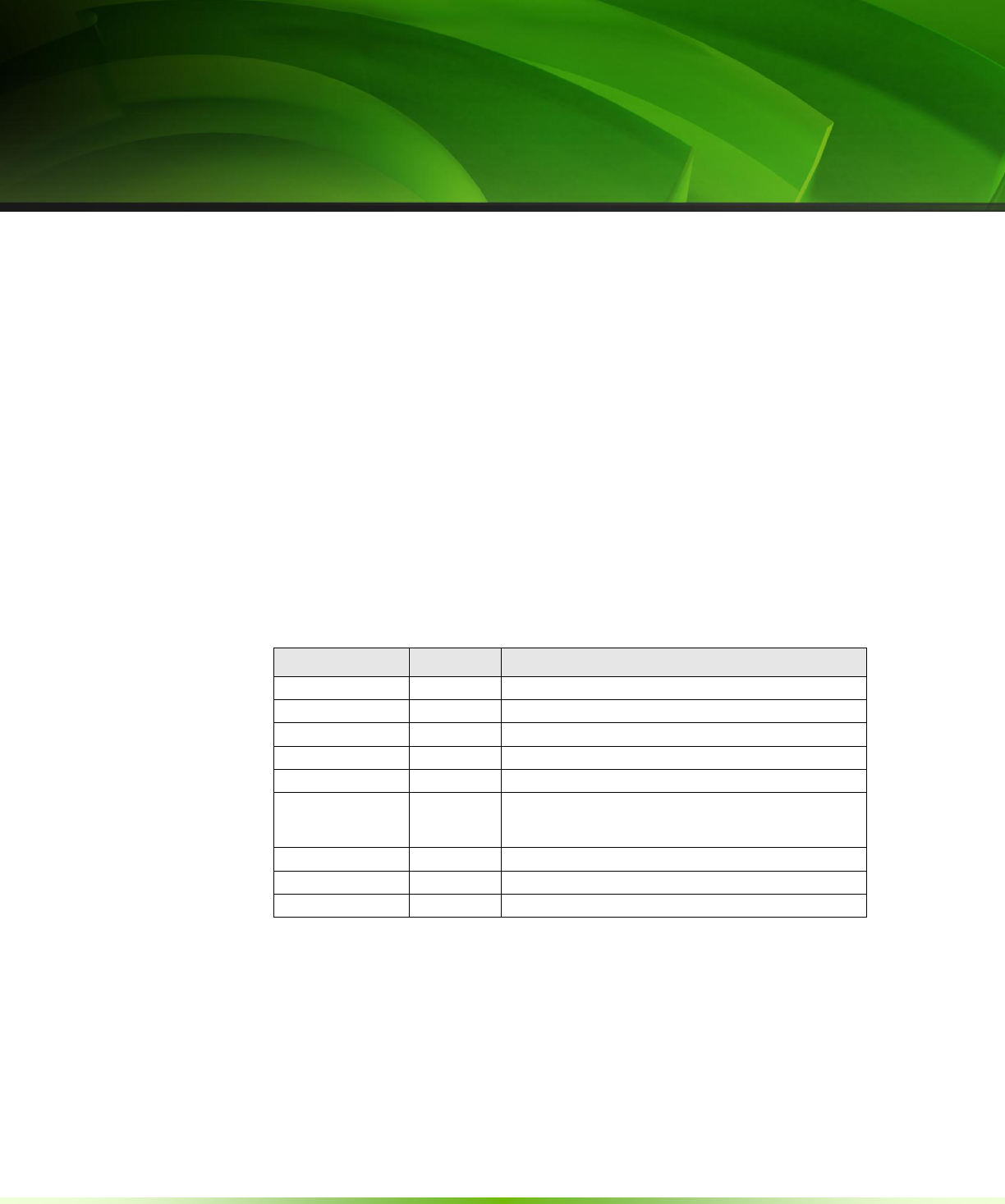

Figure F-3. Examples of Irregular Shared Memory Accesses for Devices of Compute Capability 3.0

Figure G-1. Library Context Management

Chapter 1. Introduction

1.1 From Graphics Processing to General-Purpose Parallel Computing

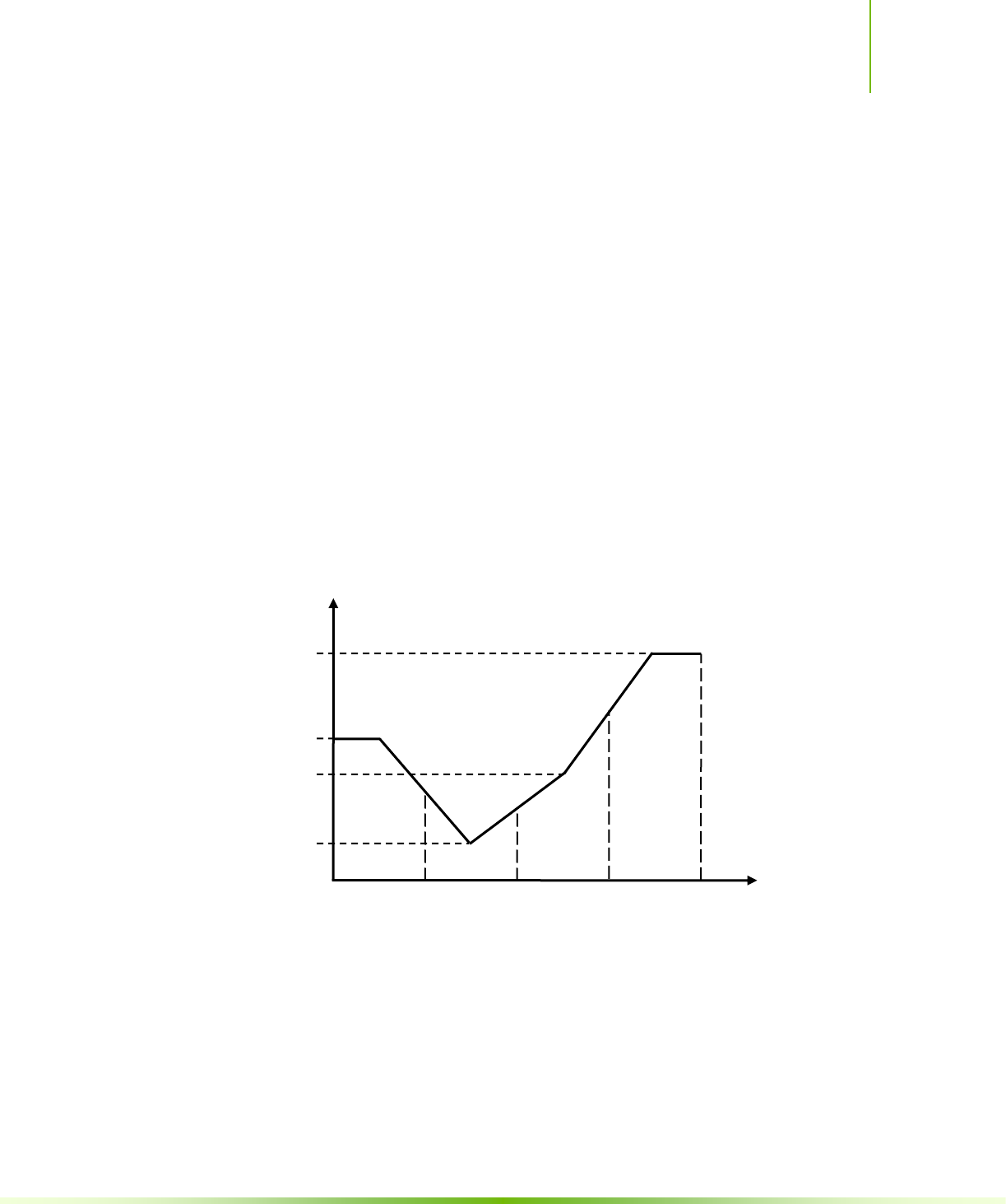

Driven by the insatiable market demand for real-time, high-definition 3D graphics, the programmable Graphics Processing Unit (GPU) has evolved into a highly parallel, multithreaded, manycore processor with tremendous computational horsepower and very high memory bandwidth, as illustrated by Figure 1-1.

Figure 1-1. Floating-Point Operations per Second and Memory Bandwidth for the CPU and GPU

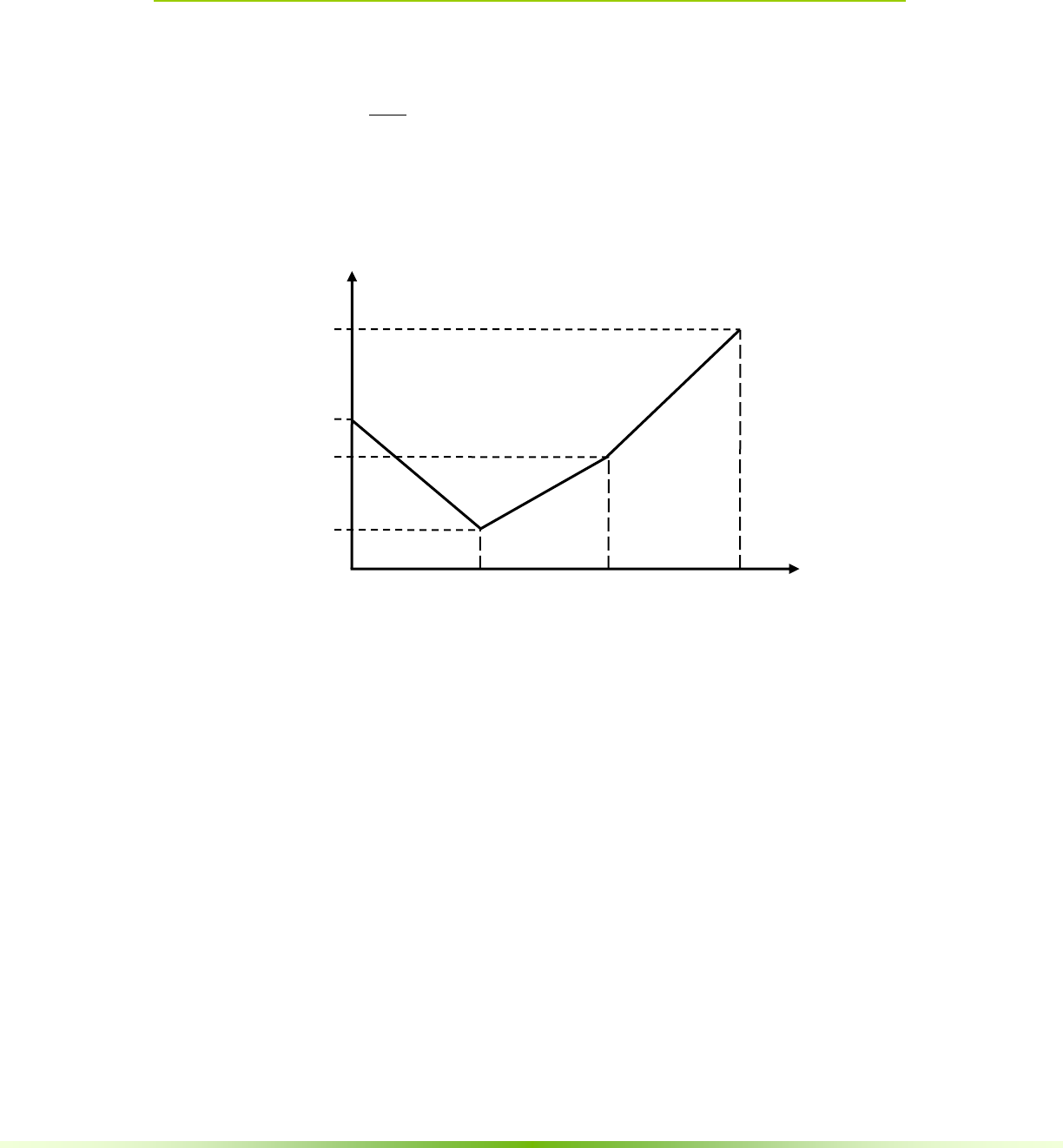

The reason behind the discrepancy in floating-point capability between the CPU and the GPU is that the GPU is specialized for compute-intensive, highly parallel computation – exactly what graphics rendering is about – and is therefore designed so that more transistors are devoted to data processing than to data caching and flow control, as schematically illustrated by Figure 1-2.

Figure 1-2. The GPU Devotes More Transistors to Data Processing

More specifically, the GPU is especially well-suited to problems that can be expressed as data-parallel computations (the same program is executed on many data elements in parallel) with high arithmetic intensity (the ratio of arithmetic operations to memory operations). Because the same program is executed for each data element, there is less need for sophisticated flow control; and because it is executed on many data elements and has high arithmetic intensity, the memory access latency can be hidden with calculations instead of large data caches.

Data-parallel processing maps data elements to parallel processing threads. Many applications that process large data sets can use a data-parallel programming model to speed up the computations. In 3D rendering, large sets of pixels and vertices are mapped to parallel threads. Similarly, image and media processing applications such as post-processing of rendered images, video encoding and decoding, image scaling, stereo vision, and pattern recognition can map image blocks and pixels to parallel processing threads. In fact, many algorithms outside the field of image rendering and processing are accelerated by data-parallel processing, from general signal processing or physics simulation to computational finance or computational biology.
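The mapping of data elements to threads described above can be sketched in CUDA C as follows. This is a minimal illustration rather than an excerpt from a later chapter: the kernel name `saxpy`, the host helper `saxpyOnDevice`, and the block size of 256 threads are assumptions chosen for the example. Each thread computes a single element of y = a·x + y.

```cuda
#include <cuda_runtime.h>

// Data-parallel kernel: the same program runs once per data element.
// Thread i updates element i: y[i] = a * x[i] + y[i].
__global__ void saxpy(int n, float a, const float* x, float* y)
{
    int i = blockIdx.x * blockDim.x + threadIdx.x;
    if (i < n)                       // guard the partially filled last block
        y[i] = a * x[i] + y[i];
}

// Hypothetical host helper (not part of the CUDA API): copies the inputs
// to the device, launches one thread per element, and copies y back.
cudaError_t saxpyOnDevice(int n, float a, const float* hX, float* hY)
{
    if (n <= 0) return cudaSuccess;  // nothing to do

    size_t bytes = (size_t)n * sizeof(float);
    float* dX = NULL;
    float* dY = NULL;

    cudaError_t err = cudaMalloc((void**)&dX, bytes);
    if (err == cudaSuccess) err = cudaMalloc((void**)&dY, bytes);
    if (err == cudaSuccess) err = cudaMemcpy(dX, hX, bytes, cudaMemcpyHostToDevice);
    if (err == cudaSuccess) err = cudaMemcpy(dY, hY, bytes, cudaMemcpyHostToDevice);
    if (err == cudaSuccess) {
        int threadsPerBlock = 256;   // assumed block size for this sketch
        int blocks = (n + threadsPerBlock - 1) / threadsPerBlock;
        saxpy<<<blocks, threadsPerBlock>>>(n, a, dX, dY);
        err = cudaGetLastError();    // reports launch-configuration errors
    }
    if (err == cudaSuccess) err = cudaMemcpy(hY, dY, bytes, cudaMemcpyDeviceToHost);

    cudaFree(dY);                    // cudaFree(NULL) is a harmless no-op
    cudaFree(dX);
    return err;
}
```

Calling `saxpyOnDevice(n, 2.0f, x, y)` from host code leaves `2 * x[i] + y[i]` in each `y[i]`. This particular kernel performs two arithmetic operations for every three memory operations (two loads and one store), so its arithmetic intensity is low; it illustrates the mapping of elements to threads, not a computation that hides memory latency well.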

1.2 CUDA™: a General-Purpose Parallel Computing Architecture

In November 2006, NVIDIA introduced CUDA™, a general purpose parallel

computing architecture – with a new parallel programming model and instruction

set architecture – that leverages the parallel compute engine in NVIDIA GPUs to

solve many complex computational problems in a more efficient way than on a

CPU.

CUDA comes with a software environment that allows developers to use C as a

high-level programming language. As illustrated by Figure 1-3, other languages,

application programming interfaces, or directives-based approaches are supported,

such as FORTRAN, DirectCompute, OpenCL, and OpenACC.

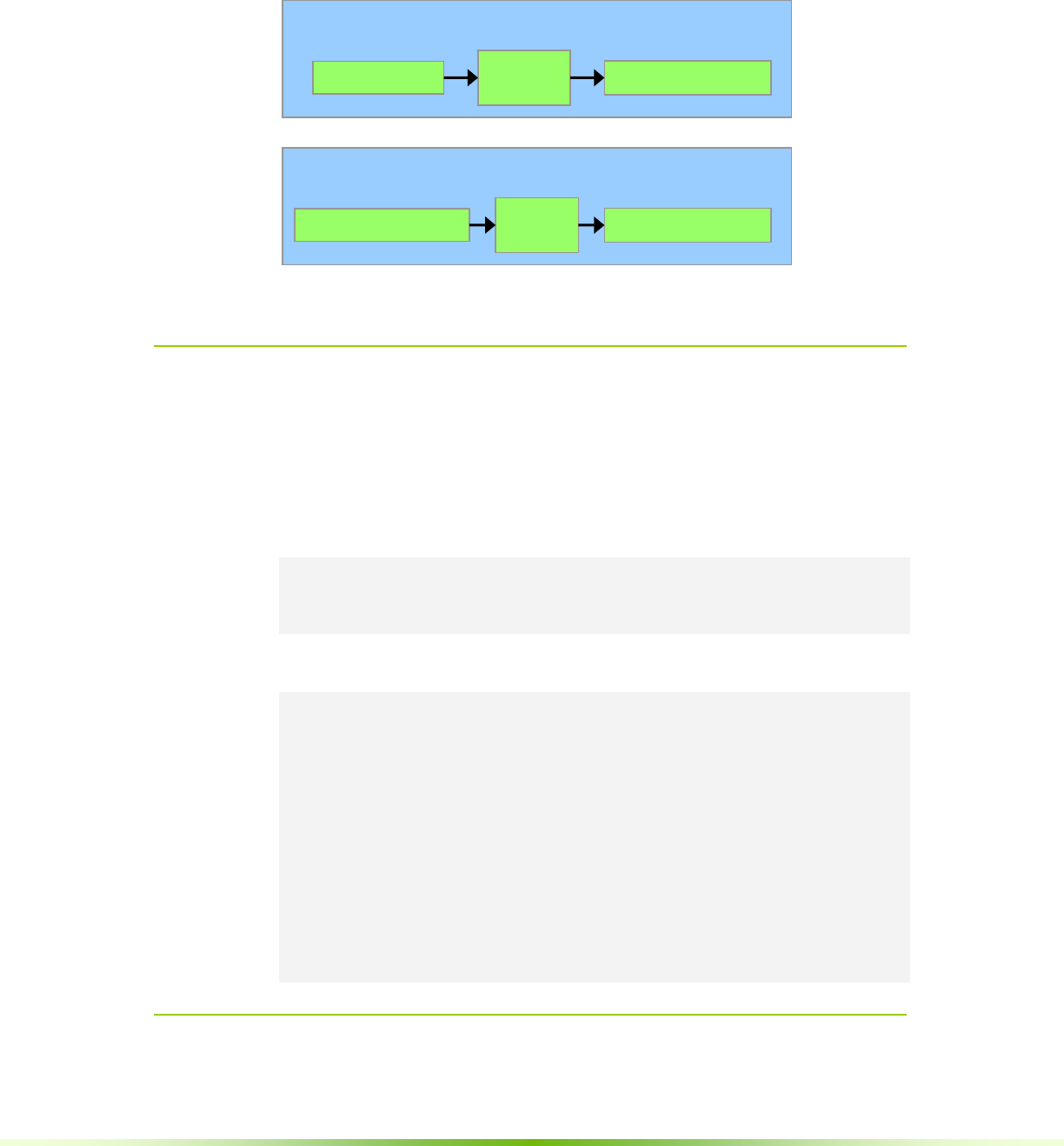

Figure 1-3. CUDA is Designed to Support Various Languages and Application Programming Interfaces

1.3 A Scalable Programming Model

The advent of multicore CPUs and manycore GPUs means that mainstream

processor chips are now parallel systems. Furthermore, their parallelism continues

to scale with Moore’s law. The challenge is to develop application software that

transparently scales its parallelism to leverage the increasing number of processor

cores, much as 3D graphics applications transparently scale their parallelism to

manycore GPUs with widely varying numbers of cores.

The CUDA parallel programming model is designed to overcome this challenge

while maintaining a low learning curve for programmers familiar with standard

programming languages such as C.

At its core are three key abstractions – a hierarchy of thread groups, shared

memories, and barrier synchronization – that are simply exposed to the programmer

as a minimal set of language extensions.

These abstractions provide fine-grained data parallelism and thread parallelism,

nested within coarse-grained data parallelism and task parallelism. They guide the

programmer to partition the problem into coarse sub-problems that can be solved

independently in parallel by blocks of threads, and each sub-problem into finer

pieces that can be solved cooperatively in parallel by all threads within the block.

This decomposition preserves language expressivity by allowing threads to

cooperate when solving each sub-problem, and at the same time enables automatic

scalability. Indeed, each block of threads can be scheduled on any of the available

multiprocessors within a GPU, in any order, concurrently or sequentially, so that a

compiled CUDA program can execute on any number of multiprocessors as

illustrated by Figure 1-4, and only the runtime system needs to know the physical

multiprocessor count.
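As a rough illustration of this scalability argument, the host-side C sketch below models an idealized scheduler that hands independent blocks to multiprocessors in rounds. The function name `waves_needed` and the assumptions behind it (one block per multiprocessor at a time, all blocks taking equally long) are hypothetical simplifications, not a description of how the hardware actually schedules work.

```c
#include <assert.h>

/* Hypothetical helper, for illustration only: number of scheduling rounds
   ("waves") needed to run num_blocks independent blocks on num_sms
   multiprocessors, assuming each multiprocessor runs one block at a time
   and every block takes the same amount of time. */
int waves_needed(int num_blocks, int num_sms)
{
    assert(num_blocks >= 0 && num_sms > 0);
    /* Ceiling division: a partially filled last wave still costs a round. */
    return (num_blocks + num_sms - 1) / num_sms;
}
```

Under this model, a program of eight blocks needs `waves_needed(8, 2)`, that is four rounds, on a GPU with two multiprocessors and `waves_needed(8, 4)`, that is two rounds, on a GPU with four, without any change to the compiled program.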

This scalable programming model allows the CUDA architecture to span a wide

market range by simply scaling the number of multiprocessors and memory

partitions: from the high-performance enthusiast GeForce GPUs and professional

Quadro and Tesla computing products to a variety of inexpensive, mainstream

GeForce GPUs (see Appendix A for a list of all CUDA-enabled GPUs).

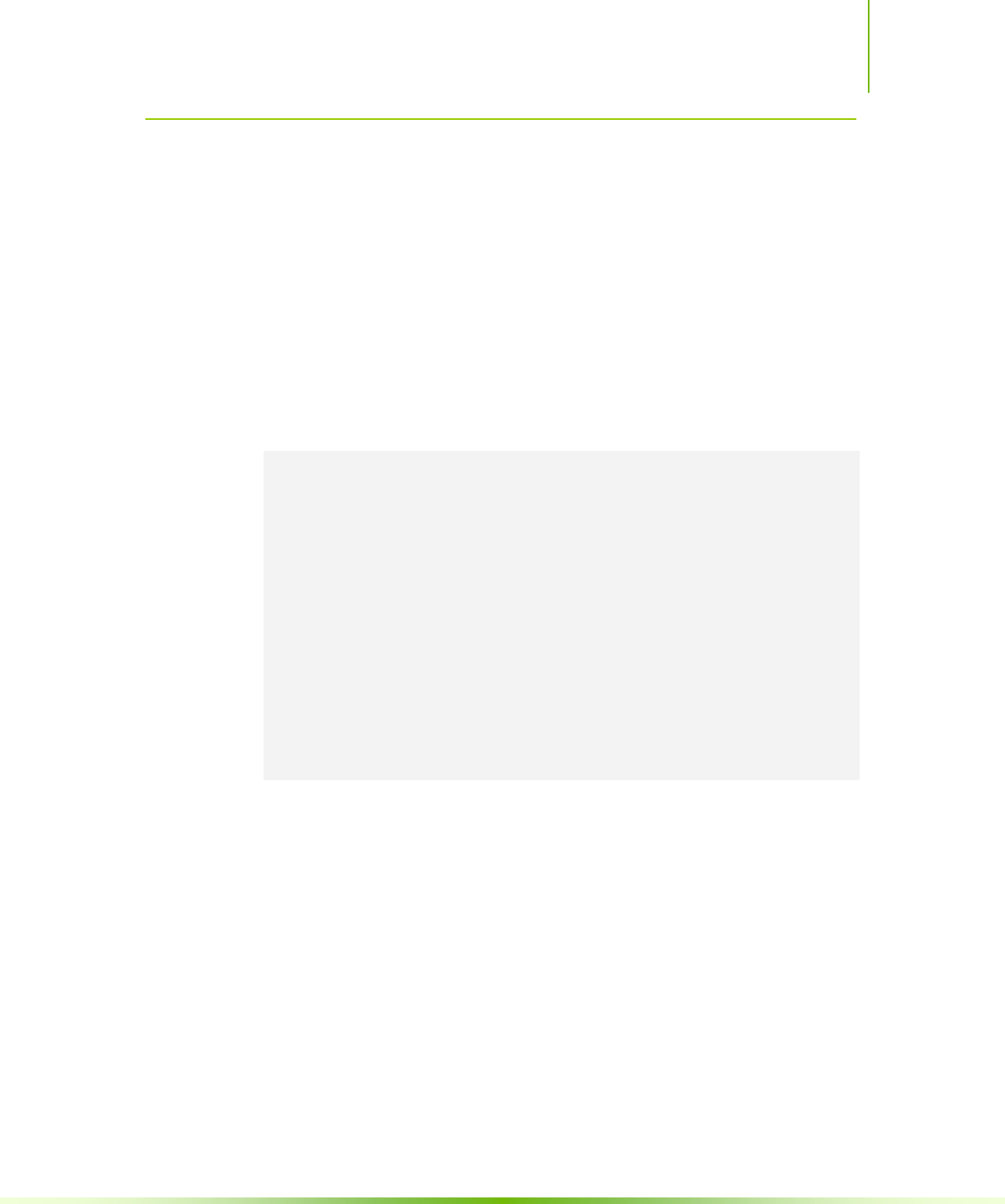

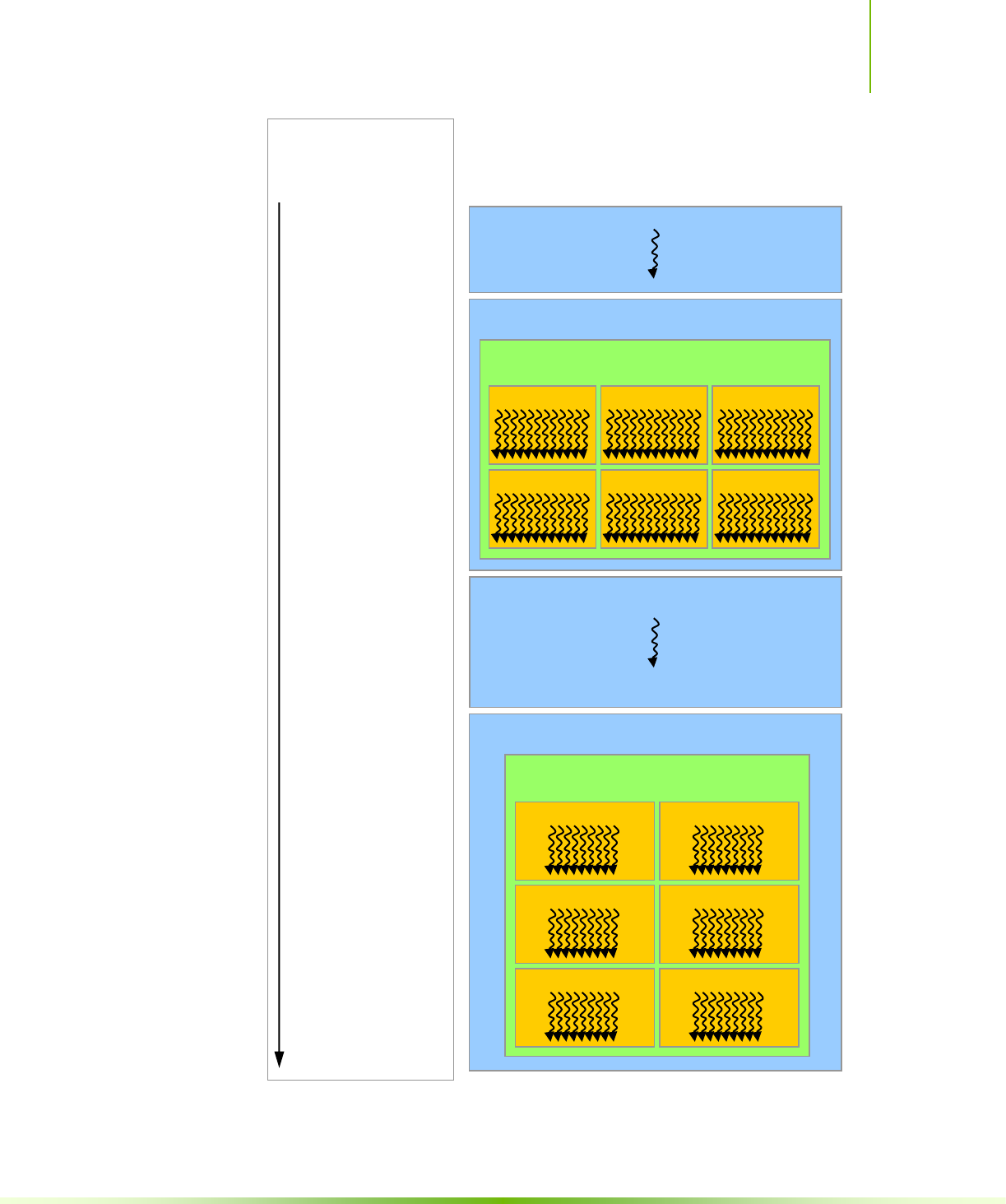

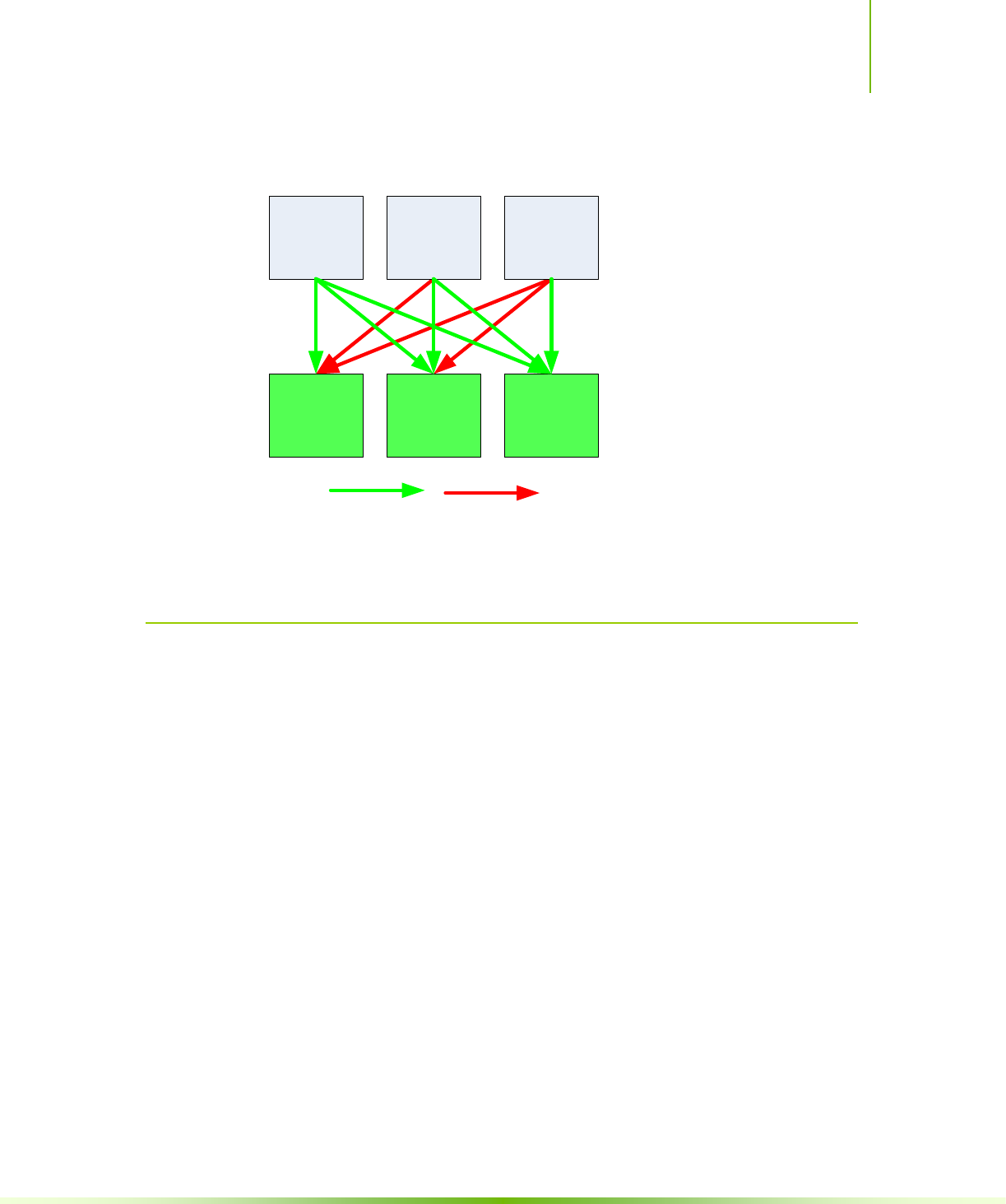

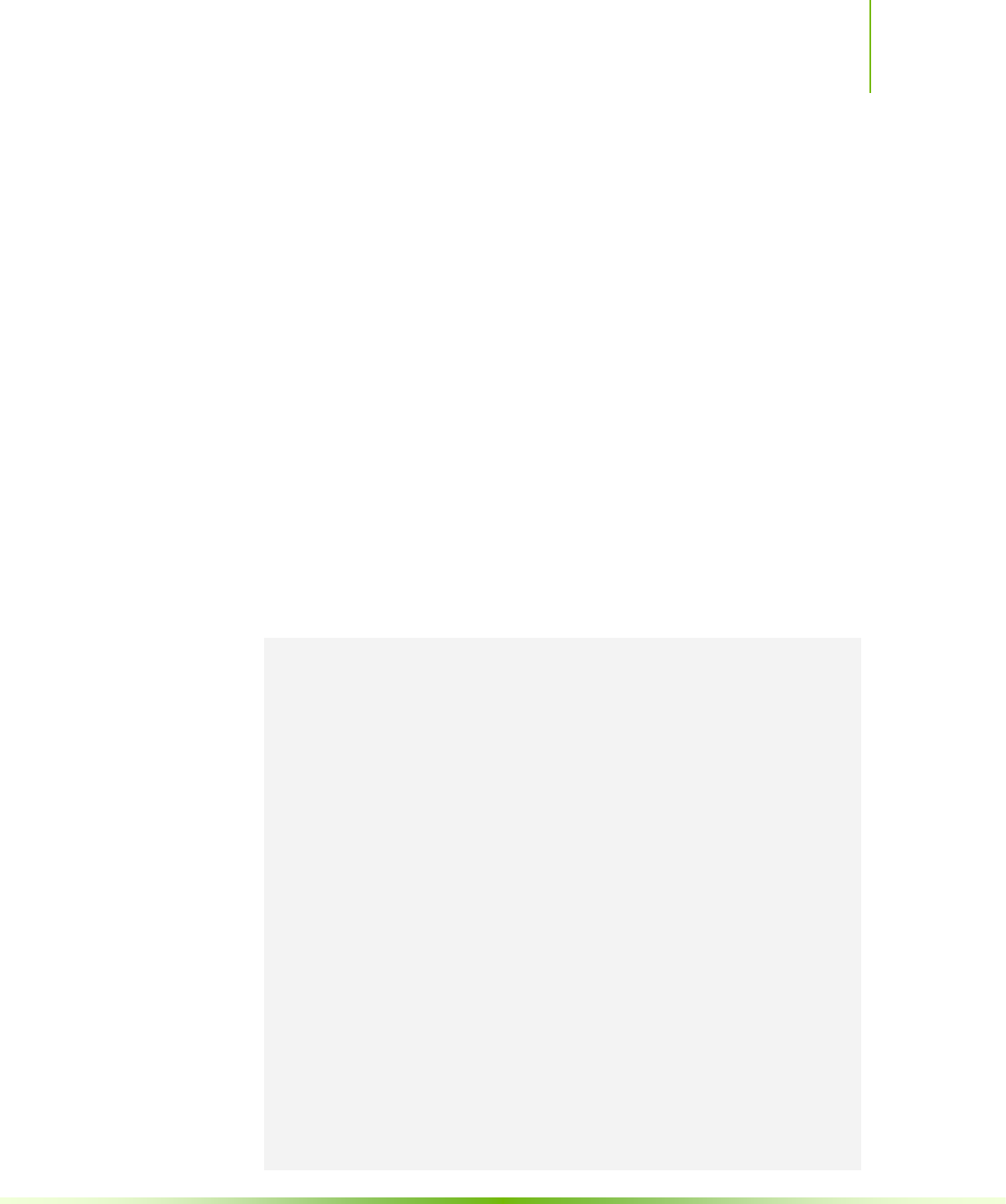

A GPU is built around an array of Streaming Multiprocessors (SMs) (see Chapter 4 for more details).

A multithreaded program is partitioned into blocks of threads that execute independently from each

other, so that a GPU with more multiprocessors will automatically execute the program in less time

than a GPU with fewer multiprocessors.

Figure 1-4. Automatic Scalability

[Figure 1-4 panels: Multithreaded CUDA Program (Block 0 to Block 7); GPU with 2 SMs (SM 0, SM 1); GPU with 4 SMs (SM 0 to SM 3). The eight blocks are distributed across the SMs available in each GPU.]

1.4 Document’s Structure

This document is organized into the following chapters:

Chapter 1 is a general introduction to CUDA.

Chapter 2 outlines the CUDA programming model.

Chapter 3 describes the programming interface.

Chapter 4 describes the hardware implementation.

Chapter 5 gives some guidance on how to achieve maximum performance.

Appendix A lists all CUDA-enabled devices.

Appendix B is a detailed description of all extensions to the C language.

Appendix C lists the mathematical functions supported in CUDA.

Appendix D lists the C++ features supported in device code.

Appendix E gives more details on texture fetching.

Appendix F gives the technical specifications of various devices, as well as more

architectural details.

Appendix G introduces the low-level driver API.

Chapter 2. Programming Model

This chapter introduces the main concepts behind the CUDA programming model

by outlining how they are exposed in C. An extensive description of CUDA C is

given in Chapter 3.

Full code for the vector addition example used in this chapter and the next can be

found in the vectorAdd SDK code sample.

2.1 Kernels

CUDA C extends C by allowing the programmer to define C functions, called

kernels, that, when called, are executed N times in parallel by N different CUDA

threads, as opposed to only once like regular C functions.

A kernel is defined using the __global__ declaration specifier and the number of

CUDA threads that execute that kernel for a given kernel call is specified using a

new <<<…>>> execution configuration syntax (see Appendix B.18). Each thread that

executes the kernel is given a unique thread ID that is accessible within the kernel

through the built-in threadIdx variable.

As an illustration, the following sample code adds two vectors A and B of size N

and stores the result into vector C:

// Kernel definition
__global__ void VecAdd(float* A, float* B, float* C)
{
    int i = threadIdx.x;
    C[i] = A[i] + B[i];
}

int main()
{
    ...
    // Kernel invocation with N threads
    VecAdd<<<1, N>>>(A, B, C);
    ...
}

Here, each of the N threads that execute VecAdd() performs one pair-wise

addition.

2.2 Thread Hierarchy

For convenience, threadIdx is a 3-component vector, so that threads can be

identified using a one-dimensional, two-dimensional, or three-dimensional thread

index, forming a one-dimensional, two-dimensional, or three-dimensional thread

block. This provides a natural way to invoke computation across the elements in a

domain such as a vector, matrix, or volume.

The index of a thread and its thread ID relate to each other in a straightforward way: For a one-dimensional block, they are the same; for a two-dimensional block of size $(D_x, D_y)$, the thread ID of a thread of index $(x, y)$ is $x + y D_x$; for a three-dimensional block of size $(D_x, D_y, D_z)$, the thread ID of a thread of index $(x, y, z)$ is $x + y D_x + z D_x D_y$.
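The relation between thread index and thread ID can be checked with a small host-side C sketch. The function name `linear_thread_id` is hypothetical and is not part of CUDA C; it only evaluates the formula given above.

```c
#include <assert.h>

/* Thread ID of the thread with index (x, y, z) in a block of size
   (Dx, Dy, Dz). With y = z = 0 this reduces to the one-dimensional case,
   and with z = 0 to the two-dimensional case. */
unsigned int linear_thread_id(unsigned int x, unsigned int y, unsigned int z,
                              unsigned int Dx, unsigned int Dy, unsigned int Dz)
{
    /* The index must lie inside the block. */
    assert(x < Dx && y < Dy && z < Dz);
    return x + y * Dx + z * Dx * Dy;
}
```

Inside a kernel, the same value would be obtained from the built-in variables as `threadIdx.x + threadIdx.y * blockDim.x + threadIdx.z * blockDim.x * blockDim.y`.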

As an example, the following code adds two matrices A and B of size NxN and

stores the result into matrix C:

// Kernel definition
__global__ void MatAdd(float A[N][N], float B[N][N],
                       float C[N][N])
{
    int i = threadIdx.x;
    int j = threadIdx.y;
    C[i][j] = A[i][j] + B[i][j];
}

int main()
{
    ...
    // Kernel invocation with one block of N * N * 1 threads
    int numBlocks = 1;
    dim3 threadsPerBlock(N, N);
    MatAdd<<<numBlocks, threadsPerBlock>>>(A, B, C);
    ...
}

There is a limit to the number of threads per block, since all threads of a block are

expected to reside on the same processor core and must share the limited memory

resources of that core. On current GPUs, a thread block may contain up to 1024

threads.

However, a kernel can be executed by multiple equally-shaped thread blocks, so that

the total number of threads is equal to the number of threads per block times the

number of blocks.

Blocks are organized into a one-dimensional, two-dimensional, or three-dimensional

grid of thread blocks as illustrated by Figure 2-1. The number of thread blocks in a

grid is usually dictated by the size of the data being processed or the number of

processors in the system, which it can greatly exceed.

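Figure 2-1. Grid of Thread Blocks

[Figure: a grid of 3 × 2 thread blocks, Block (0, 0) to Block (2, 1); Block (1, 1) is expanded to show its 4 × 3 threads, Thread (0, 0) to Thread (3, 2).]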

The number of threads per block and the number of blocks per grid specified in the

<<<…>>> syntax can be of type int or dim3. Two-dimensional blocks or grids can

be specified as in the example above.

Each block within the grid can be identified by a one-dimensional, two-dimensional,

or three-dimensional index accessible within the kernel through the built-in

blockIdx variable. The dimension of the thread block is accessible within the

kernel through the built-in blockDim variable.

Extending the previous MatAdd() example to handle multiple blocks, the code

becomes as follows.

// Kernel definition
__global__ void MatAdd(float A[N][N], float B[N][N],
                       float C[N][N])
{
    int i = blockIdx.x * blockDim.x + threadIdx.x;
    int j = blockIdx.y * blockDim.y + threadIdx.y;
    if (i < N && j < N)
        C[i][j] = A[i][j] + B[i][j];
}

int main()
{
    ...
    // Kernel invocation
    dim3 threadsPerBlock(16, 16);
    dim3 numBlocks(N / threadsPerBlock.x, N / threadsPerBlock.y);
    MatAdd<<<numBlocks, threadsPerBlock>>>(A, B, C);
    ...
}

A thread block size of 16x16 (256 threads), although arbitrary in this case, is a

common choice. The grid is created with enough blocks to have one thread per

matrix element as before. For simplicity, this example assumes that the number of

threads per grid in each dimension is evenly divisible by the number of threads per

block in that dimension, although that need not be the case.
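When the data size is not a multiple of the block size, the number of blocks per dimension is usually rounded up and the bounds check in the kernel discards the surplus threads. The host-side C sketch below shows that rounding; the helper name `blocks_to_cover` is hypothetical and only illustrates the arithmetic.

```c
#include <assert.h>

/* Smallest number of blocks of threads_per_block threads that covers
   n elements along one dimension (ceiling division). */
unsigned int blocks_to_cover(unsigned int n, unsigned int threads_per_block)
{
    assert(threads_per_block > 0);
    return (n + threads_per_block - 1) / threads_per_block;
}
```

For example, with N = 1000 and 16 threads per block in each dimension, `blocks_to_cover(1000, 16)` yields 63 blocks, that is 1008 threads per dimension; the `if (i < N && j < N)` test in MatAdd() keeps the 8 extra threads per dimension from accessing memory outside the matrices. In the host code above, this would correspond to `dim3 numBlocks(blocks_to_cover(N, threadsPerBlock.x), blocks_to_cover(N, threadsPerBlock.y));`.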

Thread blocks are required to execute independently: It must be possible to execute

them in any order, in parallel or in series. This independence requirement allows

thread blocks to be scheduled in any order across any number of cores as illustrated

by Figure 1-4, enabling programmers to write code that scales with the number of

cores.

Threads within a block can cooperate by sharing data through some shared memory

and by synchronizing their execution to coordinate memory accesses. More

precisely, one can specify synchronization points in the kernel by calling the

__syncthreads() intrinsic function; __syncthreads() acts as a barrier at

which all threads in the block must wait before any is allowed to proceed.

Section 3.2.3 gives an example of using shared memory.

For efficient cooperation, the shared memory is expected to be a low-latency

memory near each processor core (much like an L1 cache) and __syncthreads()

is expected to be lightweight.

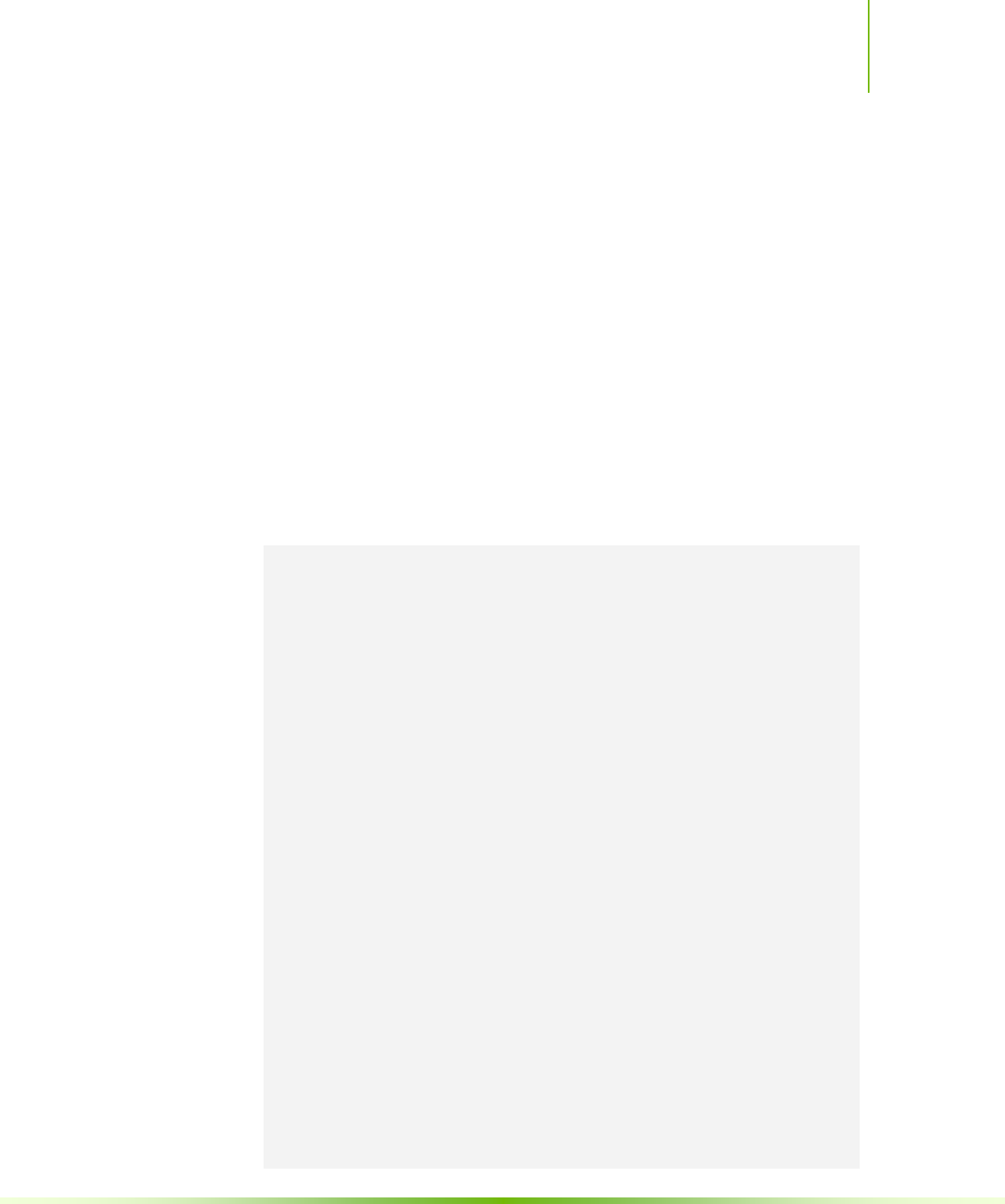

2.3 Memory Hierarchy

CUDA threads may access data from multiple memory spaces during their

execution as illustrated by Figure 2-2. Each thread has private local memory. Each

thread block has shared memory visible to all threads of the block and with the

same lifetime as the block. All threads have access to the same global memory.

There are also two additional read-only memory spaces accessible by all threads: the

constant and texture memory spaces. The global, constant, and texture memory

spaces are optimized for different memory usages (see Sections 5.3.2.1, 5.3.2.4, and

5.3.2.5). Texture memory also offers different addressing modes, as well as data

filtering, for some specific data formats (see Section 3.2.10).

The global, constant, and texture memory spaces are persistent across kernel

launches by the same application.

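Figure 2-2. Memory Hierarchy

[Figure: each thread has per-thread local memory; each thread block has per-block shared memory; the blocks of Grid 0 and Grid 1 all access the same global memory.]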

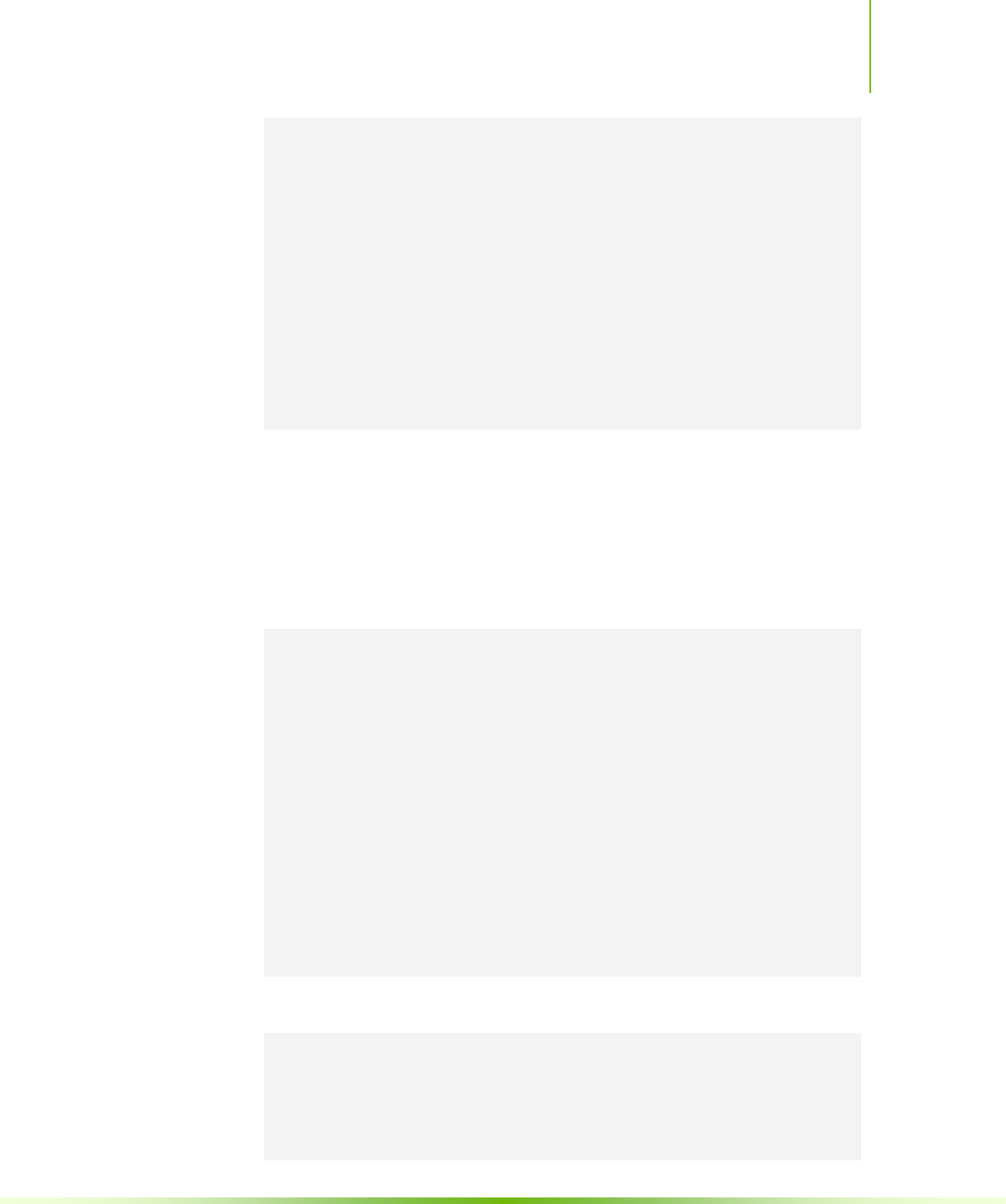

2.4 Heterogeneous Programming

As illustrated by Figure 2-3, the CUDA programming model assumes that the

CUDA threads execute on a physically separate device that operates as a coprocessor

to the host running the C program. This is the case, for example, when the kernels

execute on a GPU and the rest of the C program executes on a CPU.

The CUDA programming model also assumes that both the host and the device

maintain their own separate memory spaces in DRAM, referred to as host memory and

device memory, respectively. Therefore, a program manages the global, constant, and

texture memory spaces visible to kernels through calls to the CUDA runtime

(described in Chapter 3). This includes device memory allocation and deallocation as

well as data transfer between host and device memory.

Serial code executes on the host while parallel code executes on the device.

Figure 2-3. Heterogeneous Programming

[Figure: a C program executes sequentially, alternating serial code on the host with parallel kernels on the device: Kernel0<<<>>>() runs as Grid 0 (Block (0, 0) to Block (2, 1)) and Kernel1<<<>>>() runs as Grid 1 (Block (0, 0) to Block (1, 2)).]

2.5 Compute Capability

The compute capability of a device is defined by a major revision number and a minor

revision number.

Devices with the same major revision number are of the same core architecture. The

major revision number is 3 for devices based on the Kepler architecture, 2 for devices

based on the Fermi architecture, and 1 for devices based on the Tesla architecture.

The minor revision number corresponds to an incremental improvement to the core

architecture, possibly including new features.

Appendix A lists all CUDA-enabled devices along with their compute capability.

Appendix F gives the technical specifications of each compute capability.
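The mapping from major revision number to core architecture stated above can be written as a small lookup. This is a host-side C sketch; the function name `architecture_name` is hypothetical and covers only the three architectures named in this section.

```c
#include <assert.h>
#include <stddef.h>

/* Core architecture for a major revision number, as listed in this section.
   Returns NULL for major revisions that this section does not mention. */
const char *architecture_name(int major)
{
    assert(major >= 0);
    switch (major) {
    case 1:  return "Tesla";
    case 2:  return "Fermi";
    case 3:  return "Kepler";
    default: return NULL;
    }
}
```

A device of compute capability 1.3, for instance, has major revision number 1 and is therefore based on the Tesla architecture.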

Chapter 3. Programming Interface

CUDA C provides a simple path for users familiar with the C programming

language to easily write programs for execution by the device.

It consists of a minimal set of extensions to the C language and a runtime library.

The core language extensions have been introduced in Chapter 2. They allow

programmers to define a kernel as a C function and use some new syntax to specify

the grid and block dimension each time the function is called. A complete

description of all extensions can be found in Appendix B. Any source file that

contains some of these extensions must be compiled with nvcc as outlined in

Section 3.1.

The runtime is introduced in Section 3.2. It provides C functions that execute on

the host to allocate and deallocate device memory, transfer data between host

memory and device memory, manage systems with multiple devices, etc. A complete

description of the runtime can be found in the CUDA reference manual.

The runtime is built on top of a lower-level C API, the CUDA driver API, which is also accessible by the application. The driver API provides an additional level of control by exposing lower-level concepts such as CUDA contexts – the analogue of host processes for the device – and CUDA modules – the analogue of dynamically loaded libraries for the device. Most applications do not use the driver API because they do not need this additional level of control. When using the runtime, context and module management are implicit, which results in more concise code. The driver API is introduced in Appendix G and fully described in the reference manual.

3.1 Compilation with NVCC

Kernels can be written using the CUDA instruction set architecture, called PTX,

which is described in the PTX reference manual. It is, however, usually more

effective to use a high-level programming language such as C. In both cases, kernels

must be compiled into binary code by nvcc to execute on the device.

nvcc is a compiler driver that simplifies the process of compiling C or PTX code: It

provides simple and familiar command line options and executes them by invoking

the collection of tools that implement the different compilation stages. This section

gives an overview of nvcc workflow and command options. A complete

description can be found in the nvcc user manual.

3.1.1 Compilation Workflow

3.1.1.1 Offline Compilation

Source files compiled with nvcc can include a mix of host code (i.e. code that

executes on the host) and device code (i.e. code that executes on the device). nvcc’s

basic workflow consists in separating device code from host code and then:

compiling the device code into an assembly form (PTX code) and/or binary

form (cubin object),

and modifying the host code by replacing the <<<…>>> syntax introduced in

Section 2.1 (and described in more detail in Section B.18) by the necessary

CUDA C runtime function calls to load and launch each compiled kernel from

the PTX code and/or cubin object.

The modified host code is output either as C code that is left to be compiled using

another tool or as object code directly by letting nvcc invoke the host compiler

during the last compilation stage.

Applications can then:

Either link to the compiled host code,

Or ignore the modified host code (if any) and use the CUDA driver API (see

Appendix G) to load and execute the PTX code or cubin object.

3.1.1.2 Just-in-Time Compilation

Any PTX code loaded by an application at runtime is compiled further to binary

code by the device driver. This is called just-in-time compilation. Just-in-time

compilation increases application load time, but allows applications to benefit from

the latest compiler improvements. It is also the only way for applications to run on

devices that did not exist at the time the application was compiled, as detailed in

Section 3.1.4.

When the device driver just-in-time compiles some PTX code for some application,

it automatically caches a copy of the generated binary code in order to avoid

repeating the compilation in subsequent invocations of the application. The cache –

referred to as compute cache – is automatically invalidated when the device driver is

upgraded, so that applications can benefit from the improvements in the new just-

in-time compiler built into the device driver.

Environment variables are available to control just-in-time compilation:

Setting CUDA_CACHE_DISABLE to 1 disables caching (i.e. no binary code is

added to or retrieved from the cache).

CUDA_CACHE_MAXSIZE specifies the size of the compute cache in bytes; the

default size is 32 MB and the maximum size is 4 GB; binary codes whose size

exceeds the cache size are not cached; older binary codes are evicted from the

cache to make room for newer binary codes if needed.

CUDA_CACHE_PATH specifies the folder where the compute cache files are stored; the default values are:

on Windows, %APPDATA%\NVIDIA\ComputeCache,

on MacOS, $HOME/Library/Application\ Support/NVIDIA/ComputeCache,

on Linux, ~/.nv/ComputeCache.

Setting CUDA_FORCE_PTX_JIT to 1 forces the device driver to ignore any

binary code embedded in an application (see Section 3.1.4) and to just-in-time

compile embedded PTX code instead; if a kernel does not have embedded PTX

code, it will fail to load; this environment variable can be used to validate that

PTX code is embedded in an application and that its just-in-time compilation

works as expected to guarantee application forward compatibility with future

architectures.

3.1.2 Binary Compatibility

Binary code is architecture-specific. A cubin object is generated using the compiler option -code that specifies the targeted architecture: For example, compiling with -code=sm_13 produces binary code for devices of compute capability 1.3. Binary compatibility is guaranteed from one minor revision to the next one, but not from one minor revision to the previous one or across major revisions. In other words, a cubin object generated for compute capability X.y is only guaranteed to execute on devices of compute capability X.z where z≥y.
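The compatibility rule above can be expressed as a simple predicate. The host-side C sketch below is illustrative only; the function name `cubin_guaranteed_to_run` is hypothetical and does not correspond to any CUDA API.

```c
#include <assert.h>
#include <stdbool.h>

/* Returns true when a cubin object generated for compute capability
   bin_major.bin_minor is guaranteed to execute on a device of compute
   capability dev_major.dev_minor: same major revision, and a device minor
   revision that is greater than or equal to the one the cubin targets. */
bool cubin_guaranteed_to_run(int bin_major, int bin_minor,
                             int dev_major, int dev_minor)
{
    assert(bin_major >= 1 && bin_minor >= 0);
    assert(dev_major >= 1 && dev_minor >= 0);
    return bin_major == dev_major && dev_minor >= bin_minor;
}
```

For example, code built with -code=sm_11 is covered on a device of compute capability 1.3, whereas code built with -code=sm_13 is covered neither on a 1.1 device (previous minor revision) nor on a 2.0 device (different major revision).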

3.1.3 PTX Compatibility

Some PTX instructions are only supported on devices of higher compute

capabilities. For example, atomic instructions on global memory are only supported

on devices of compute capability 1.1 and above; double-precision instructions are

only supported on devices of compute capability 1.3 and above. The -arch

compiler option specifies the compute capability that is assumed when compiling C

to PTX code. So, code that contains double-precision arithmetic, for example, must

be compiled with “-arch=sm_13” (or higher compute capability), otherwise

double-precision arithmetic will get demoted to single-precision arithmetic.

PTX code produced for some specific compute capability can always be compiled to

binary code of greater or equal compute capability.

3.1.4 Application Compatibility

To execute code on devices of specific compute capability, an application must load

binary or PTX code that is compatible with this compute capability as described in

Sections 3.1.2 and 3.1.3. In particular, to be able to execute code on future

architectures with higher compute capability – for which no binary code can be

generated yet –, an application must load PTX code that will be just-in-time

compiled for these devices (see Section 3.1.1.2).

Which PTX and binary code gets embedded in a CUDA C application is controlled

by the -arch and -code compiler options or the -gencode compiler option as

detailed in the nvcc user manual. For example,

nvcc x.cu
    -gencode arch=compute_10,code=sm_10
    -gencode arch=compute_11,code=\'compute_11,sm_11\'

embeds binary code compatible with compute capability 1.0 (first -gencode option) and PTX and binary code compatible with compute capability 1.1 (second -gencode option).

Host code is generated to automatically select at runtime the most appropriate code

to load and execute, which, in the above example, will be:

1.0 binary code for devices with compute capability 1.0,

1.1 binary code for devices with compute capability 1.1, 1.2, 1.3,

binary code obtained by compiling 1.1 PTX code for devices with compute

capabilities 2.0 and higher.
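The selection described in the list above can be modeled as follows. This host-side C sketch is a simplified model under stated assumptions, not the logic the generated host code or the driver actually implements: it prefers the newest compatible binary (Section 3.1.2) and otherwise falls back to the newest PTX code that can be just-in-time compiled for the device (Section 3.1.3). The names `CodeKind`, `EmbeddedCode`, and `select_code` are hypothetical.

```c
#include <assert.h>
#include <stddef.h>

typedef enum { CODE_BINARY, CODE_PTX } CodeKind;

/* One piece of embedded code and the compute capability it targets. */
typedef struct {
    CodeKind kind;
    int major;
    int minor;
} EmbeddedCode;

/* Returns the index of the code to load for a device of compute capability
   dev_major.dev_minor, or -1 if nothing embedded is usable. */
int select_code(const EmbeddedCode *images, size_t count,
                int dev_major, int dev_minor)
{
    int best = -1;
    size_t i;
    assert(images != NULL || count == 0);

    /* Pass 1: binary code with the same major revision and the highest
       minor revision that does not exceed the device's. */
    for (i = 0; i < count; ++i) {
        const EmbeddedCode *c = &images[i];
        if (c->kind == CODE_BINARY && c->major == dev_major &&
            c->minor <= dev_minor &&
            (best < 0 || c->minor > images[best].minor))
            best = (int)i;
    }
    if (best >= 0)
        return best;

    /* Pass 2: PTX code of the highest compute capability that is less than
       or equal to the device's; it would be just-in-time compiled. */
    for (i = 0; i < count; ++i) {
        const EmbeddedCode *c = &images[i];
        int usable = c->major < dev_major ||
                     (c->major == dev_major && c->minor <= dev_minor);
        if (c->kind == CODE_PTX && usable &&
            (best < 0 || c->major > images[best].major ||
             (c->major == images[best].major &&
              c->minor > images[best].minor)))
            best = (int)i;
    }
    return best;
}
```

With the three pieces of code embedded by the nvcc command above (1.0 binary, 1.1 binary, 1.1 PTX), this model picks the 1.0 binary for a 1.0 device, the 1.1 binary for 1.1, 1.2, and 1.3 devices, and the 1.1 PTX code for a 2.0 device, matching the list.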

x.cu can have an optimized code path that uses atomic operations, for example,

which are only supported in devices of compute capability 1.1 and higher. The

__CUDA_ARCH__ macro can be used to differentiate various code paths based on

compute capability. It is only defined for device code. When compiling with

“-arch=compute_11” for example, __CUDA_ARCH__ is equal to 110.
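As a sketch of how such code paths are usually separated, the C fragment below shows the value the macro takes for a given compute capability and a preprocessor guard built on it. The function names `cuda_arch_value` and `atomics_path_enabled` are hypothetical. The fragment is written as plain C so that it also compiles without nvcc, in which case __CUDA_ARCH__ is undefined and the fallback branch is taken; in a real CUDA C source file the guarded function would carry the appropriate declaration specifiers.

```c
#include <assert.h>

/* Value of __CUDA_ARCH__ for compute capability major.minor,
   for example 110 for compute_11 and 130 for compute_13. */
int cuda_arch_value(int major, int minor)
{
    assert(major >= 1 && minor >= 0 && minor <= 9);
    return major * 100 + minor * 10;
}

/* Reports which code path was compiled in. */
int atomics_path_enabled(void)
{
#if defined(__CUDA_ARCH__) && __CUDA_ARCH__ >= 110
    return 1;  /* device code for compute capability 1.1 or higher */
#else
    return 0;  /* host code, or device code for compute capability 1.0 */
#endif
}
```

Because the macro is only defined for device code, the `defined(__CUDA_ARCH__)` test is what keeps the guard well-formed when the same source is also compiled for the host.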

Applications using the driver API must compile code to separate files and explicitly

load and execute the most appropriate file at runtime.

The nvcc user manual lists various shorthands for the -arch, -code, and -gencode compiler options. For example, “-arch=sm_13” is a shorthand for “-arch=compute_13 -code=compute_13,sm_13” (which is the same as “-gencode arch=compute_13,code=\'compute_13,sm_13\'”).

3.1.5 C/C++ Compatibility

The front end of the compiler processes CUDA source files according to C++

syntax rules. Full C++ is supported for the host code. However, only a subset of

C++ is fully supported for the device code as described in Appendix D. As a

consequence of the use of C++ syntax rules, void pointers (e.g., returned by

malloc()) cannot be assigned to non-void pointers without a typecast.

3.1.6 64-Bit Compatibility

The 64-bit version of nvcc compiles device code in 64-bit mode (i.e. pointers are

64-bit). Device code compiled in 64-bit mode is only supported with host code

compiled in 64-bit mode.

Similarly, the 32-bit version of nvcc compiles device code in 32-bit mode and

device code compiled in 32-bit mode is only supported with host code compiled in

32-bit mode.

The 32-bit version of nvcc can also compile device code in 64-bit mode using the -m64 compiler option.

The 64-bit version of nvcc can also compile device code in 32-bit mode using the -m32 compiler option.

3.2 CUDA C Runtime

The runtime is implemented in the cudart dynamic library which is typically

included in the application installation package. All its entry points are prefixed with

cuda.

As mentioned in Section 2.4, the CUDA programming model assumes a system

composed of a host and a device, each with their own separate memory.

Section 3.2.2 gives an overview of the runtime functions used to manage device

memory.

Section 3.2.3 illustrates the use of shared memory, introduced in Section 2.2, to

maximize performance.

Section 3.2.4 introduces page-locked host memory that is required to overlap kernel

execution with data transfers between host and device memory.

Section 3.2.5 describes the concepts and API used to enable asynchronous

concurrent execution at various levels in the system.

Section 3.2.6 shows how the programming model extends to a system with multiple

devices attached to the same host.

Section 3.2.8 describes how to properly check the errors generated by the runtime.

Section 3.2.9 mentions the runtime functions used to manage the CUDA C call

stack.

Section 3.2.10 presents the texture and surface memory spaces that provide another

way to access device memory; they also expose a subset of the GPU texturing

hardware.

Section 3.2.11 introduces the various functions the runtime provides to interoperate

with the two main graphics APIs, OpenGL and Direct3D.

3.2.1 Initialization

There is no explicit initialization function for the runtime; it initializes the first time

a runtime function is called (more specifically any function other than functions

from the device and version management sections of the reference manual). One

needs to keep this in mind when timing runtime function calls and when

interpreting the error code from the first call into the runtime.

During initialization, the runtime creates a CUDA context for each device in the

system (see Section G.1 for more details on CUDA contexts). This context is the

primary context for this device and it is shared among all the host threads of the

application. This all happens under the hood and the runtime does not expose the

primary context to the application.

When a host thread calls cudaDeviceReset(), this destroys the primary context

of the device the host thread currently operates on (i.e. the current device as defined

in Section 3.2.6.2). The next runtime function call made by any host thread that has

this device as current will create a new primary context for this device.

3.2.2 Device Memory

As mentioned in Section 2.4, the CUDA programming model assumes a system

composed of a host and a device, each with their own separate memory. Kernels

can only operate out of device memory, so the runtime provides functions to

allocate, deallocate, and copy device memory, as well as transfer data between host

memory and device memory.

Device memory can be allocated either as linear memory or as CUDA arrays.

CUDA arrays are opaque memory layouts optimized for texture fetching. They are

described in Section 3.2.10.

Linear memory exists on the device in a 32-bit address space for devices of compute capability 1.x and a 40-bit address space for devices of higher compute capability, so separately allocated entities can reference one another via pointers, for example, in a binary tree.

Linear memory is typically allocated using cudaMalloc() and freed using cudaFree(), and data transfers between host memory and device memory are typically done using cudaMemcpy(). In the vector addition code sample of Section 2.1, the vectors need to be copied from host memory to device memory:

// Device code
__global__ void VecAdd(float* A, float* B, float* C, int N)
{
    int i = blockDim.x * blockIdx.x + threadIdx.x;
    if (i < N)
        C[i] = A[i] + B[i];
}

// Host code
int main()
{
    int N = ...;
    size_t size = N * sizeof(float);

    // Allocate input vectors h_A and h_B in host memory
    float* h_A = (float*)malloc(size);
    float* h_B = (float*)malloc(size);

    // Allocate output vector h_C in host memory
    float* h_C = (float*)malloc(size);

    // Initialize input vectors
    ...

    // Allocate vectors in device memory
    float* d_A;
    cudaMalloc(&d_A, size);
    float* d_B;
    cudaMalloc(&d_B, size);
    float* d_C;
    cudaMalloc(&d_C, size);

    // Copy vectors from host memory to device memory
    cudaMemcpy(d_A, h_A, size, cudaMemcpyHostToDevice);
    cudaMemcpy(d_B, h_B, size, cudaMemcpyHostToDevice);

    // Invoke kernel with enough blocks to cover all N elements
    int threadsPerBlock = 256;
    int blocksPerGrid =
        (N + threadsPerBlock - 1) / threadsPerBlock;
    VecAdd<<<blocksPerGrid, threadsPerBlock>>>(d_A, d_B, d_C, N);

    // Copy result from device memory to host memory
    // h_C contains the result in host memory
    cudaMemcpy(h_C, d_C, size, cudaMemcpyDeviceToHost);

    // Free device memory
    cudaFree(d_A);
    cudaFree(d_B);
    cudaFree(d_C);

    // Free host memory
    ...
}

Linear memory can also be allocated through cudaMallocPitch() and

cudaMalloc3D(). These functions are recommended for allocations of 2D or 3D

arrays as they make sure that the allocation is appropriately padded to meet the

alignment requirements described in Section 5.3.2.1, therefore ensuring best

performance when accessing the row addresses or performing copies between 2D

arrays and other regions of device memory (using the cudaMemcpy2D() and

cudaMemcpy3D() functions). The returned pitch (or stride) must be used to access

array elements. The following code sample allocates a width×height 2D array of

floating-point values and shows how to loop over the array elements in device code:

// Host code
int width = 64, height = 64;
float* devPtr;
size_t pitch;
cudaMallocPitch(&devPtr, &pitch,
                width * sizeof(float), height);
MyKernel<<<100, 512>>>(devPtr, pitch, width, height);

// Device code
__global__ void MyKernel(float* devPtr,
                         size_t pitch, int width, int height)
{
    for (int r = 0; r < height; ++r) {
        // pitch is in bytes, so step through rows using a char pointer
        float* row = (float*)((char*)devPtr + r * pitch);
        for (int c = 0; c < width; ++c) {
            float element = row[c];
        }
    }
}

The following code sample allocates a width×height×depth 3D array of

floating-point values and shows how to loop over the array elements in device code:

// Host code
int width = 64, height = 64, depth = 64;
cudaExtent extent = make_cudaExtent(width * sizeof(float),
                                    height, depth);
cudaPitchedPtr devPitchedPtr;
cudaMalloc3D(&devPitchedPtr, extent);
MyKernel<<<100, 512>>>(devPitchedPtr, width, height, depth);

// Device code
__global__ void MyKernel(cudaPitchedPtr devPitchedPtr,
                         int width, int height, int depth)
{
    // ptr is a void pointer, so an explicit cast is required (see Section 3.1.5)
    char* devPtr = (char*)devPitchedPtr.ptr;
    size_t pitch = devPitchedPtr.pitch;
    size_t slicePitch = pitch * height;
    for (int z = 0; z < depth; ++z) {
        char* slice = devPtr + z * slicePitch;
        for (int y = 0; y < height; ++y) {
            float* row = (float*)(slice + y * pitch);
            for (int x = 0; x < width; ++x) {
                float element = row[x];
            }
        }
    }
}

The reference manual lists all the various functions used to copy memory between

linear memory allocated with cudaMalloc(), linear memory allocated with

cudaMallocPitch() or cudaMalloc3D(), CUDA arrays, and memory

allocated for variables declared in global or constant memory space.

The following code sample illustrates various ways of accessing global variables via

the runtime API:

__constant__ float constData[256];

float data[256];

cudaMemcpyToSymbol(constData, data, sizeof(data));

cudaMemcpyFromSymbol(data, constData, sizeof(data));

__device__ float devData;

float value = 3.14f;

cudaMemcpyToSymbol(devData, &value, sizeof(float));

__device__ float* devPointer;

float* ptr;

cudaMalloc(&ptr, 256 * sizeof(float));

cudaMemcpyToSymbol(devPointer, &ptr, sizeof(ptr));

cudaGetSymbolAddress() is used to retrieve the address pointing to the

memory allocated for a variable declared in global memory space. The size of the

allocated memory is obtained through cudaGetSymbolSize().

3.2.3 Shared Memory

As detailed in Section B.2, shared memory is allocated using the __shared__ qualifier.

Shared memory is expected to be much faster than global memory, as mentioned in Section 2.2 and detailed in Section 5.3.2.3. Any opportunity to replace global memory accesses with shared memory accesses should therefore be exploited, as illustrated by the following matrix multiplication example.

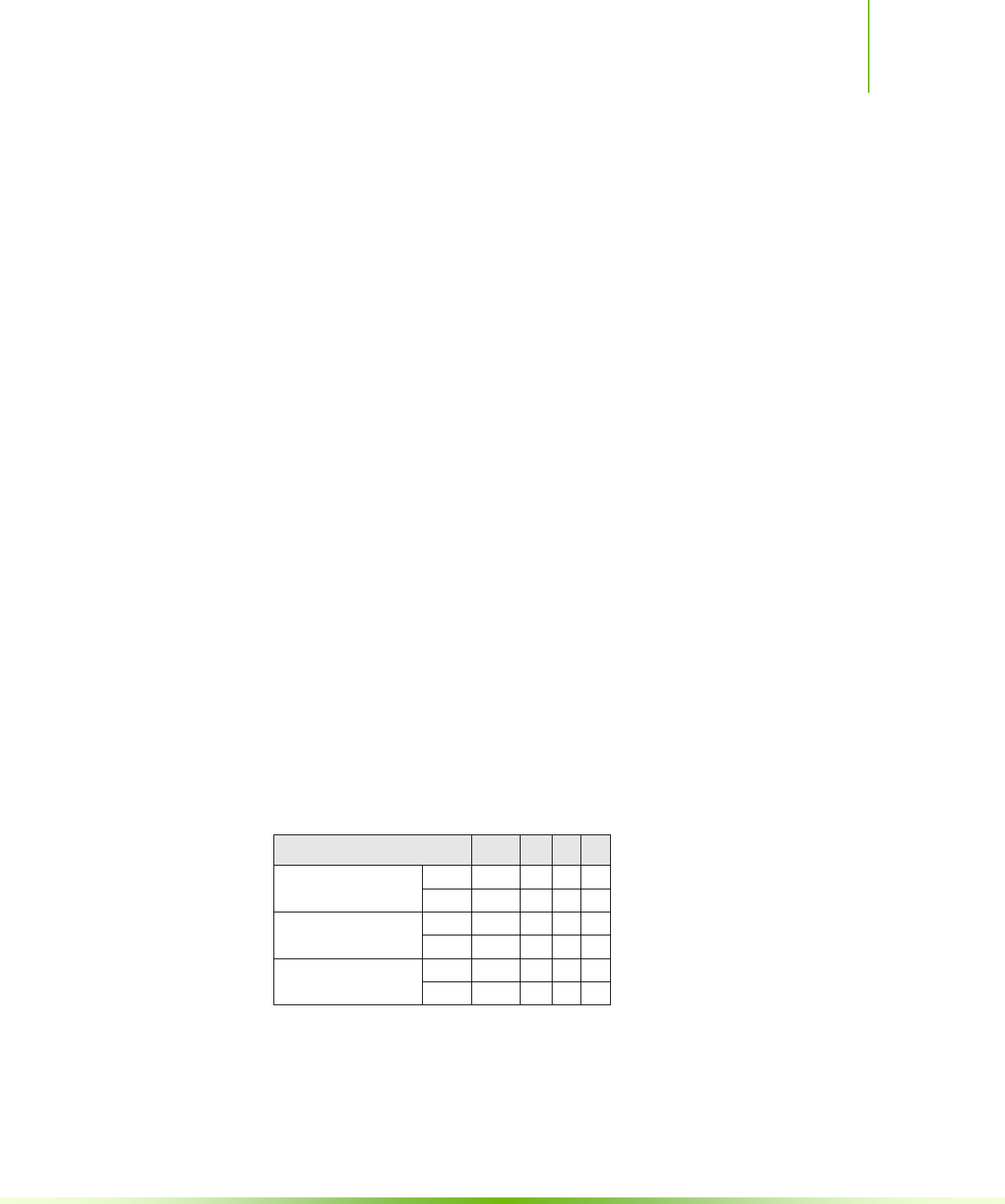

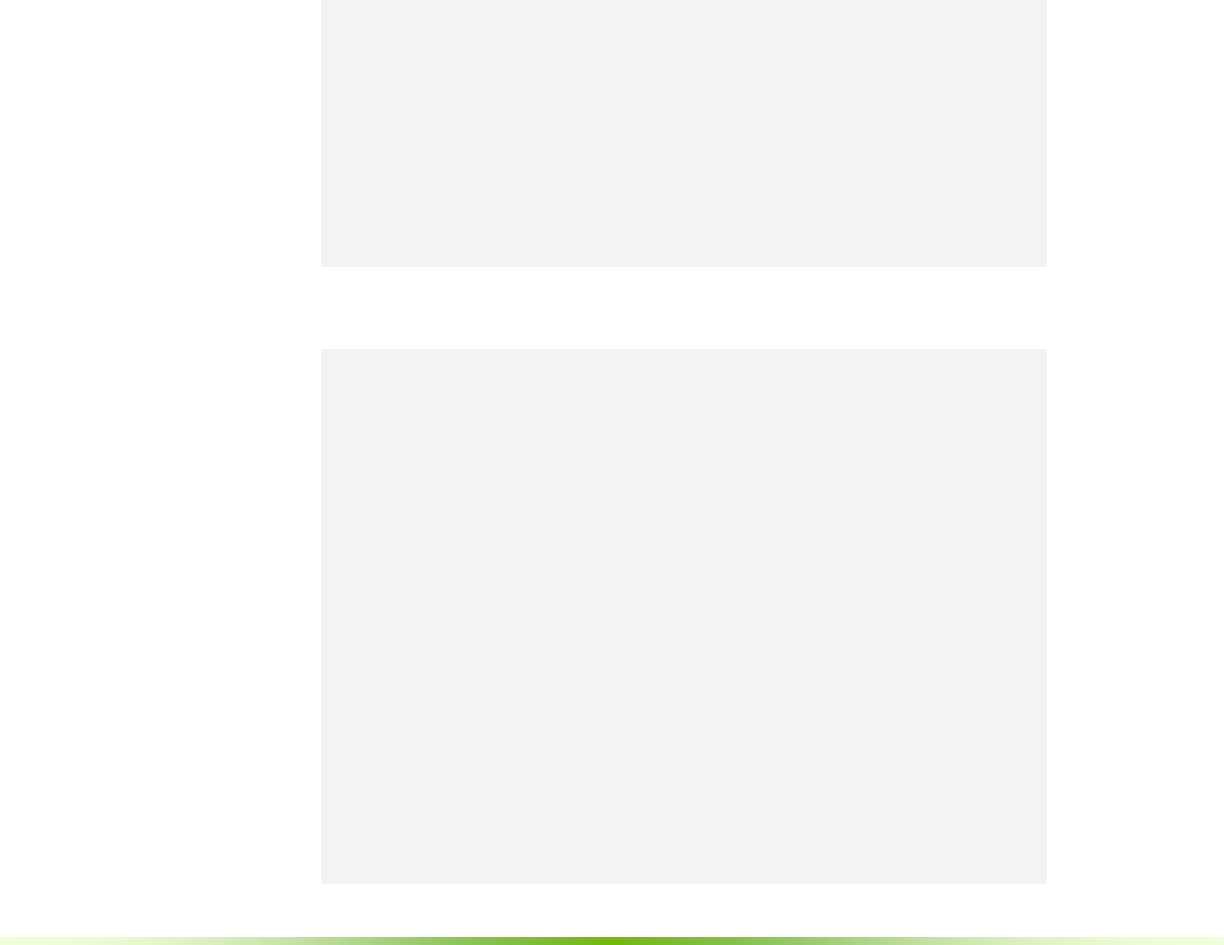

The following code sample is a straightforward implementation of matrix

multiplication that does not take advantage of shared memory. Each thread reads

one row of A and one column of B and computes the corresponding element of C

as illustrated in Figure 3-1. A is therefore read B.width times from global memory

and B is read A.height times.

// Matrices are stored in row-major order:

// M(row, col) = *(M.elements + row * M.width + col)

typedef struct {

int width;

int height;

float* elements;

} Matrix;

// Thread block size

#define BLOCK_SIZE 16

// Forward declaration of the matrix multiplication kernel

__global__ void MatMulKernel(const Matrix, const Matrix, Matrix);

// Matrix multiplication - Host code

// Matrix dimensions are assumed to be multiples of BLOCK_SIZE

void MatMul(const Matrix A, const Matrix B, Matrix C)

{

// Load A and B to device memory

Matrix d_A;

d_A.width = A.width; d_A.height = A.height;

size_t size = A.width * A.height * sizeof(float);

cudaMalloc(&d_A.elements, size);

cudaMemcpy(d_A.elements, A.elements, size,

cudaMemcpyHostToDevice);

Matrix d_B;

d_B.width = B.width; d_B.height = B.height;

size = B.width * B.height * sizeof(float);

cudaMalloc(&d_B.elements, size);

cudaMemcpy(d_B.elements, B.elements, size,

cudaMemcpyHostToDevice);

// Allocate C in device memory

Matrix d_C;

d_C.width = C.width; d_C.height = C.height;

size = C.width * C.height * sizeof(float);

cudaMalloc(&d_C.elements, size);

// Invoke kernel

dim3 dimBlock(BLOCK_SIZE, BLOCK_SIZE);

dim3 dimGrid(B.width / dimBlock.x, A.height / dimBlock.y);

MatMulKernel<<<dimGrid, dimBlock>>>(d_A, d_B, d_C);

// Read C from device memory

cudaMemcpy(C.elements, d_C.elements, size,

cudaMemcpyDeviceToHost);

// Free device memory

cudaFree(d_A.elements);

cudaFree(d_B.elements);

cudaFree(d_C.elements);

}

// Matrix multiplication kernel called by MatMul()

__global__ void MatMulKernel(Matrix A, Matrix B, Matrix C)

{

// Each thread computes one element of C

// by accumulating results into Cvalue

float Cvalue = 0;

int row = blockIdx.y * blockDim.y + threadIdx.y;

int col = blockIdx.x * blockDim.x + threadIdx.x;

for (int e = 0; e < A.width; ++e)

Cvalue += A.elements[row * A.width + e]

* B.elements[e * B.width + col];

C.elements[row * C.width + col] = Cvalue;

}
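When experimenting with a kernel like this one, it can help to compare its output against a plain host implementation. The following is a minimal sketch of such a reference, assuming the same row-major layout as the sample; `HostMatrix` and `matMulReference()` are hypothetical names introduced here for illustration and are not part of the guide's code or of the CUDA runtime.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Host-side counterpart of the sample's Matrix layout (row-major):
// M(row, col) = elements[row * width + col]
struct HostMatrix {
    int width;
    int height;
    std::vector<float> elements;
};

// Hypothetical reference implementation: the same inner loop as the kernel,
// with (row, col) coming from two host loops instead of thread indices.
inline HostMatrix matMulReference(const HostMatrix& A, const HostMatrix& B)
{
    assert(A.width == B.height);
    HostMatrix C{B.width, A.height,
                 std::vector<float>(
                     static_cast<std::size_t>(B.width) * A.height, 0.0f)};
    for (int row = 0; row < A.height; ++row) {
        for (int col = 0; col < B.width; ++col) {
            float Cvalue = 0;
            for (int e = 0; e < A.width; ++e)
                Cvalue += A.elements[row * A.width + e]
                        * B.elements[e * B.width + col];
            C.elements[row * C.width + col] = Cvalue;
        }
    }
    return C;
}
```

Because the inner loop accumulates in the same order as the kernel, the results are expected to be close. Bit-exact agreement is not guaranteed, since the device compiler may contract multiply-add pairs into fused operations.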

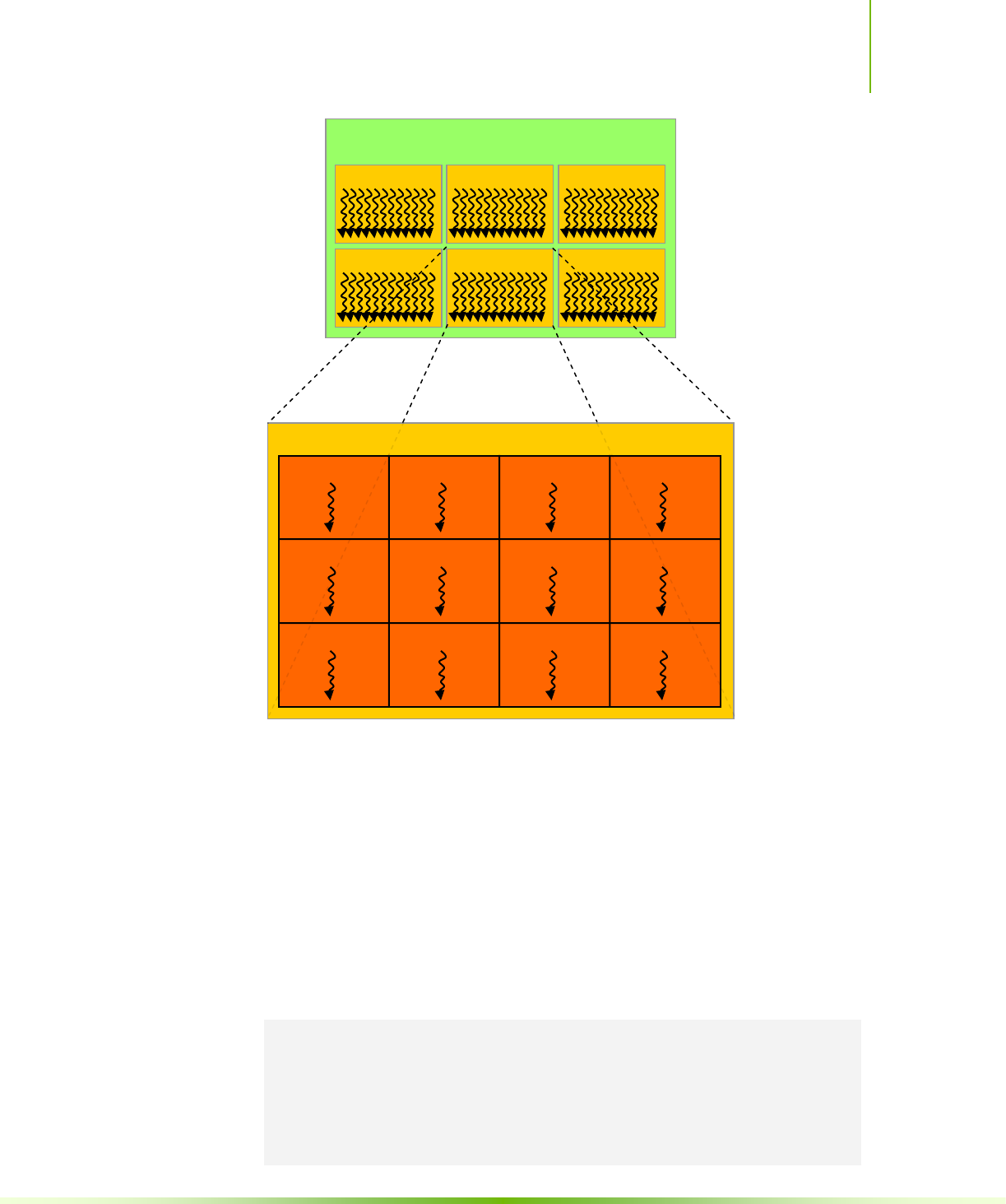

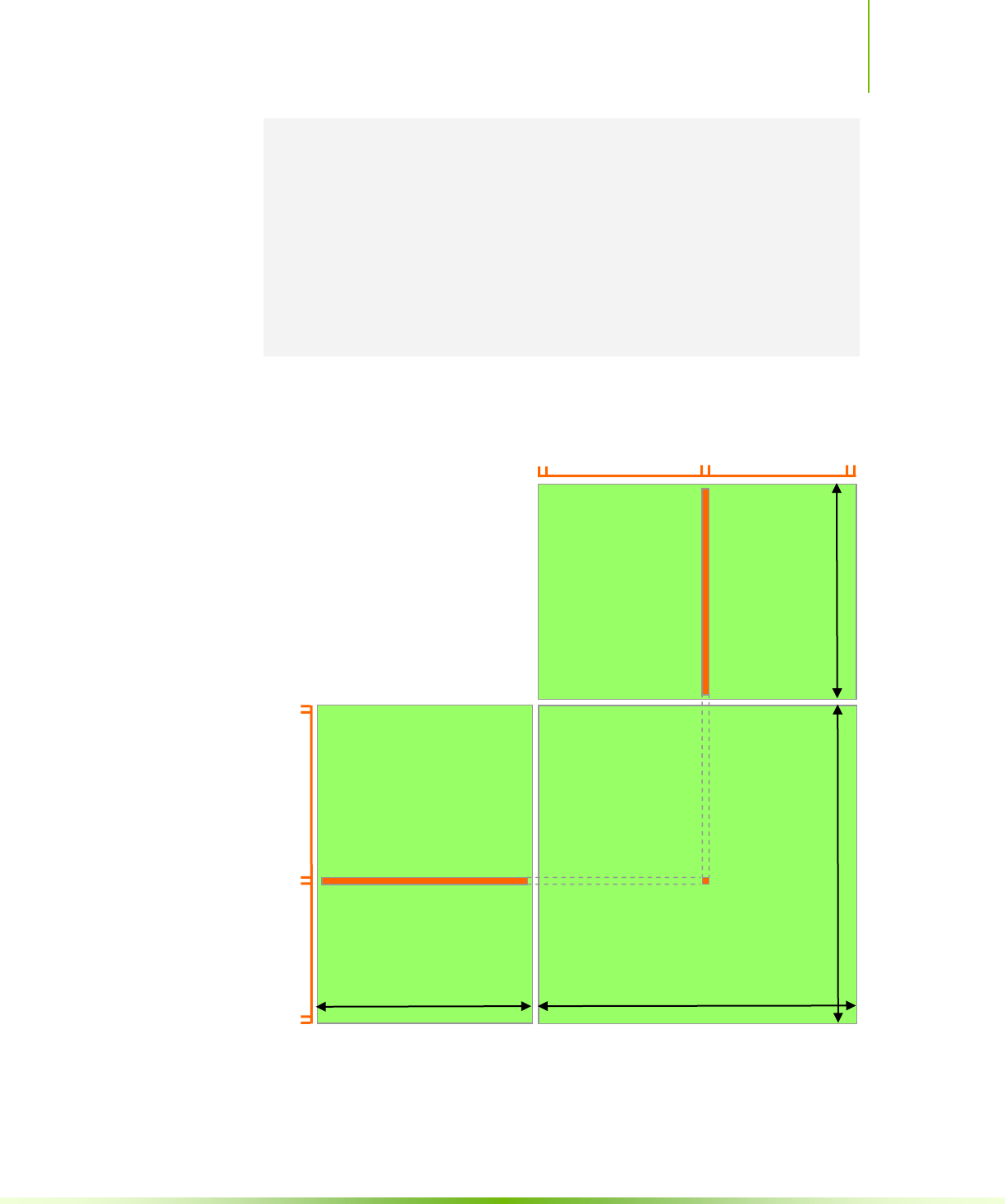

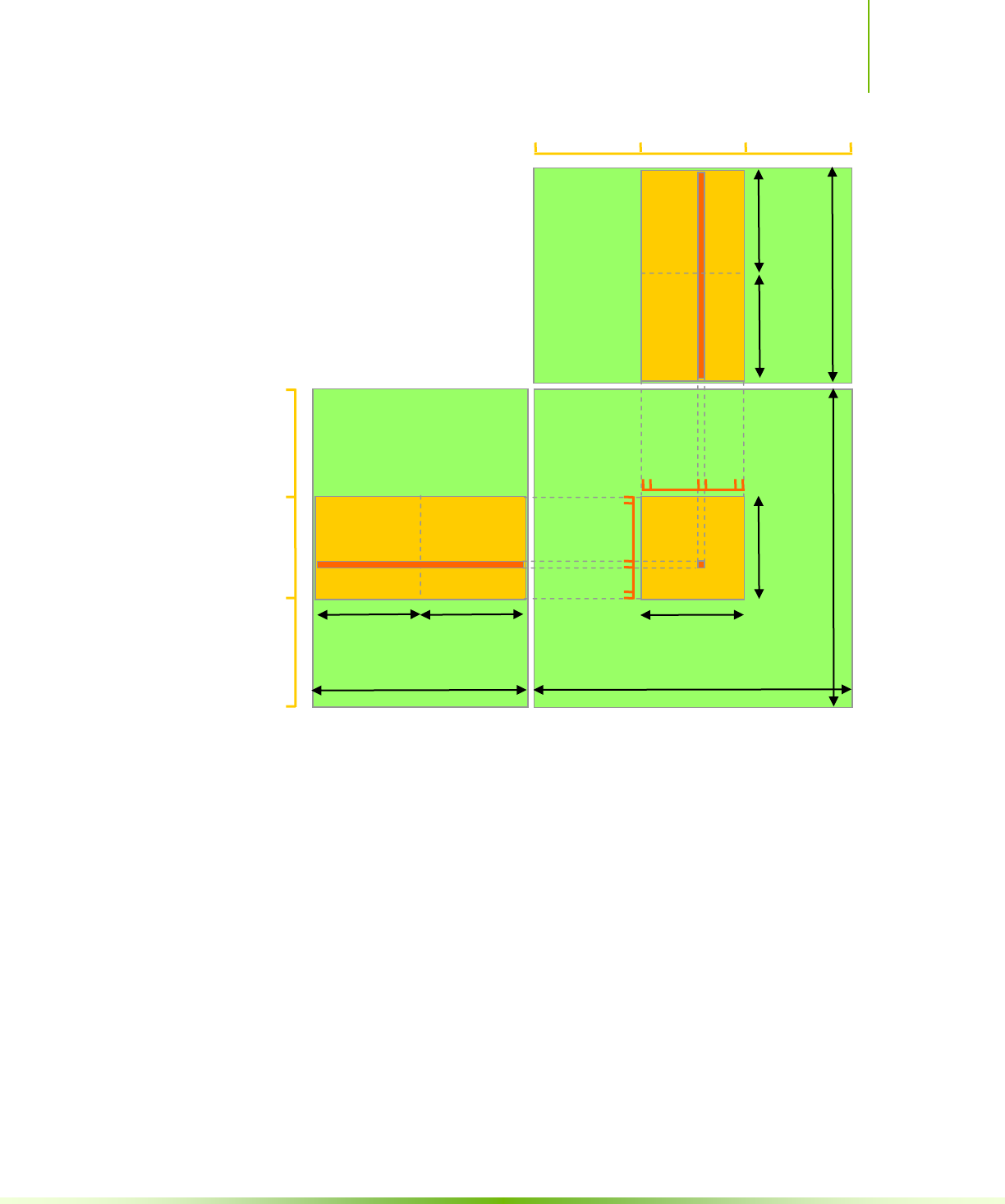

Figure 3-1. Matrix Multiplication without Shared Memory

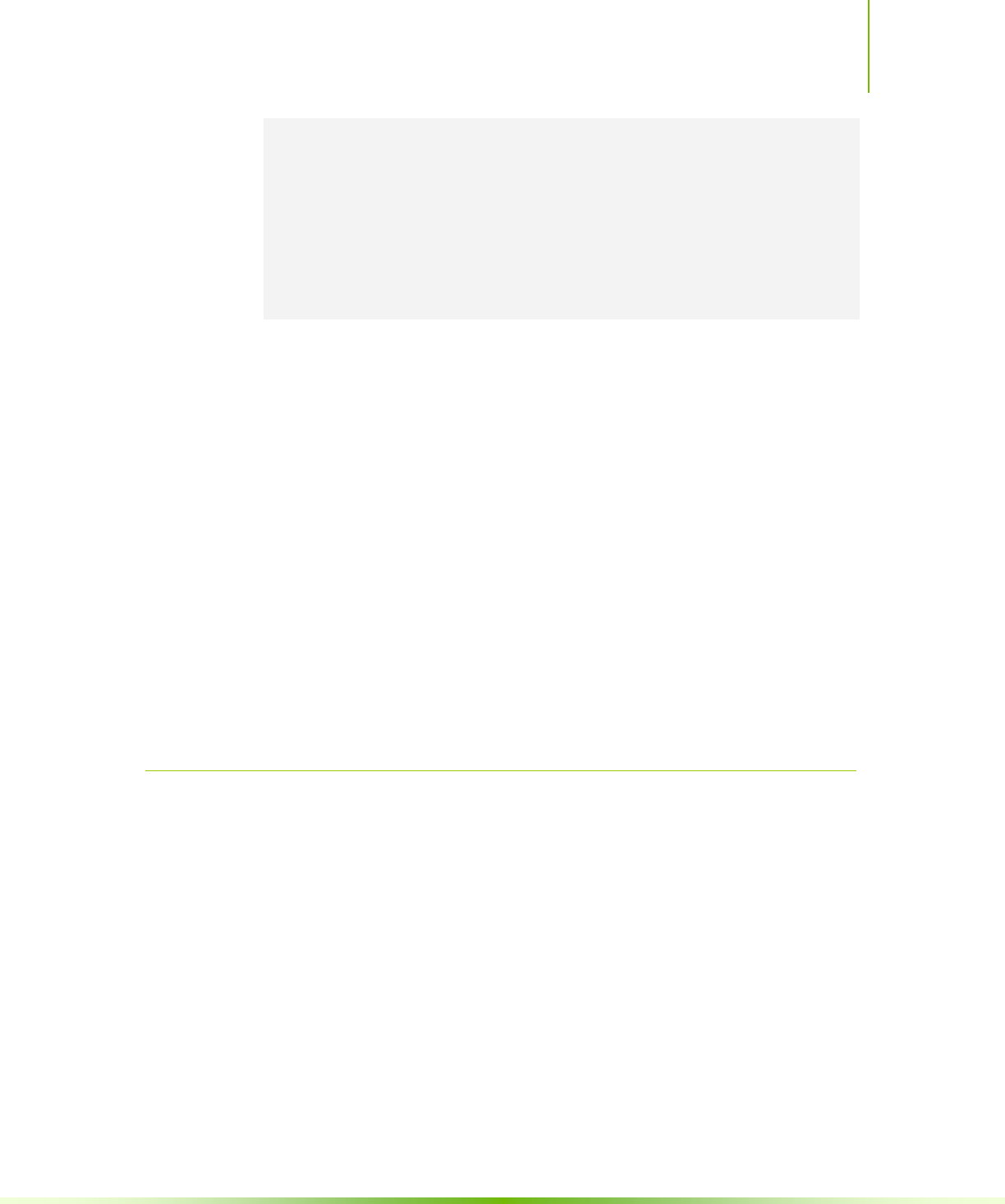

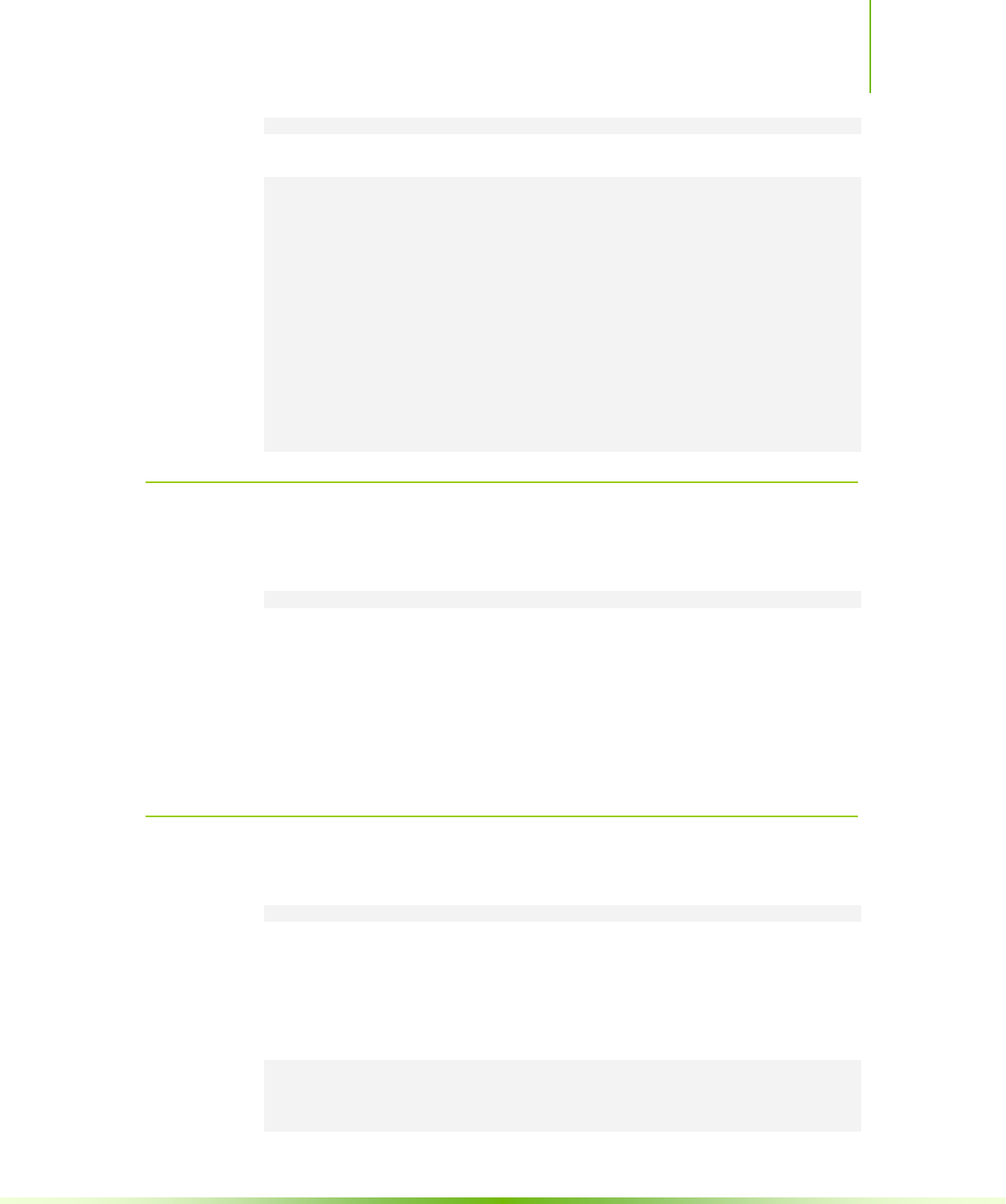

The following code sample is an implementation of matrix multiplication that does

take advantage of shared memory. In this implementation, each thread block is

responsible for computing one square sub-matrix Csub of C and each thread within

the block is responsible for computing one element of Csub. As illustrated in Figure 3-2, Csub is equal to the product of two rectangular matrices: the sub-matrix of A of dimension (A.width, block_size) that has the same row indices as Csub, and the sub-matrix of B of dimension (block_size, A.width) that has the same column indices as Csub. To fit into the device’s resources, these two rectangular matrices are divided into as many square matrices of dimension block_size as necessary, and Csub is computed as the sum of the products of these square matrices. Each of these products is performed in two steps: first, the two corresponding square matrices are loaded from global memory into shared memory, with each thread loading one element of each matrix; then each thread computes one element of the product. Each thread accumulates the result of each of these products into a register and, once done, writes the result to global memory.

By blocking the computation this way, we take advantage of fast shared memory

and save a lot of global memory bandwidth since A is only read (B.width / block_size)

times from global memory and B is read (A.height / block_size) times.
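The saving can be made concrete by counting element loads. The sketch below is a simplified host-only model, assuming that every read of a matrix element counts as one global memory load and ignoring caching and coalescing; `LoadCounts`, `naiveLoads()` and `tiledLoads()` are hypothetical names used only for this illustration.

```cpp
#include <cassert>
#include <cstdint>

// Element loads from global memory, counted per input matrix.
struct LoadCounts {
    std::uint64_t fromA;
    std::uint64_t fromB;
};

// Without shared memory: A (aHeight * aWidth elements) is read bWidth times
// and B (aWidth * bWidth elements) is read aHeight times.
inline LoadCounts naiveLoads(std::uint64_t aHeight, std::uint64_t aWidth,
                             std::uint64_t bWidth)
{
    return {aHeight * aWidth * bWidth, aWidth * bWidth * aHeight};
}

// With blocking: A is read bWidth / blockSize times and B is read
// aHeight / blockSize times. Dimensions are assumed to be multiples
// of blockSize, as in the code samples.
inline LoadCounts tiledLoads(std::uint64_t aHeight, std::uint64_t aWidth,
                             std::uint64_t bWidth, std::uint64_t blockSize)
{
    return {aHeight * aWidth * (bWidth / blockSize),
            aWidth * bWidth * (aHeight / blockSize)};
}
```

Under this model, two 1024×1024 matrices and a block size of 16 give 2^30 element loads per input matrix without blocking and 2^26 with it, a reduction by a factor equal to the block size.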

The Matrix type from the previous code sample is augmented with a stride field, so that sub-matrices can be efficiently represented with the same type. __device__ functions (see Section B.1.1) are used to get and set elements and build any sub-matrix from a matrix.

// Matrices are stored in row-major order:

// M(row, col) = *(M.elements + row * M.stride + col)

typedef struct {

int width;

int height;

int stride;

float* elements;

} Matrix;

// Get a matrix element

__device__ float GetElement(const Matrix A, int row, int col)

{

return A.elements[row * A.stride + col];

}

// Set a matrix element

__device__ void SetElement(Matrix A, int row, int col,

float value)

{

A.elements[row * A.stride + col] = value;

}

// Thread block size

// (defined before GetSubMatrix(), which uses it)

#define BLOCK_SIZE 16

// Get the BLOCK_SIZExBLOCK_SIZE sub-matrix Asub of A that is

// located col sub-matrices to the right and row sub-matrices down

// from the upper-left corner of A

__device__ Matrix GetSubMatrix(Matrix A, int row, int col)

{

Matrix Asub;

Asub.width = BLOCK_SIZE;

Asub.height = BLOCK_SIZE;

Asub.stride = A.stride;

Asub.elements = &A.elements[A.stride * BLOCK_SIZE * row

+ BLOCK_SIZE * col];

return Asub;

}

// Forward declaration of the matrix multiplication kernel

__global__ void MatMulKernel(const Matrix, const Matrix, Matrix);

// Matrix multiplication - Host code

// Matrix dimensions are assumed to be multiples of BLOCK_SIZE

void MatMul(const Matrix A, const Matrix B, Matrix C)

{

// Load A and B to device memory

Matrix d_A;

d_A.width = d_A.stride = A.width; d_A.height = A.height;

size_t size = A.width * A.height * sizeof(float);

cudaMalloc(&d_A.elements, size);

cudaMemcpy(d_A.elements, A.elements, size,

cudaMemcpyHostToDevice);

Matrix d_B;

d_B.width = d_B.stride = B.width; d_B.height = B.height;

size = B.width * B.height * sizeof(float);

cudaMalloc(&d_B.elements, size);

cudaMemcpy(d_B.elements, B.elements, size,

cudaMemcpyHostToDevice);

// Allocate C in device memory

Matrix d_C;

d_C.width = d_C.stride = C.width; d_C.height = C.height;

size = C.width * C.height * sizeof(float);

cudaMalloc(&d_C.elements, size);

// Invoke kernel

dim3 dimBlock(BLOCK_SIZE, BLOCK_SIZE);

dim3 dimGrid(B.width / dimBlock.x, A.height / dimBlock.y);

MatMulKernel<<<dimGrid, dimBlock>>>(d_A, d_B, d_C);

// Read C from device memory

cudaMemcpy(C.elements, d_C.elements, size,

cudaMemcpyDeviceToHost);

// Free device memory

cudaFree(d_A.elements);

cudaFree(d_B.elements);

cudaFree(d_C.elements);

}

// Matrix multiplication kernel called by MatMul()

__global__ void MatMulKernel(Matrix A, Matrix B, Matrix C)

{

// Block row and column

int blockRow = blockIdx.y;

int blockCol = blockIdx.x;

// Each thread block computes one sub-matrix Csub of C

Matrix Csub = GetSubMatrix(C, blockRow, blockCol);

// Each thread computes one element of Csub

// by accumulating results into Cvalue

float Cvalue = 0;

// Thread row and column within Csub

int row = threadIdx.y;

int col = threadIdx.x;

// Loop over all the sub-matrices of A and B that are

// required to compute Csub

// Multiply each pair of sub-matrices together

// and accumulate the results

for (int m = 0; m < (A.width / BLOCK_SIZE); ++m) {

// Get sub-matrix Asub of A

Matrix Asub = GetSubMatrix(A, blockRow, m);

// Get sub-matrix Bsub of B

Matrix Bsub = GetSubMatrix(B, m, blockCol);

// Shared memory used to store Asub and Bsub respectively

__shared__ float As[BLOCK_SIZE][BLOCK_SIZE];

__shared__ float Bs[BLOCK_SIZE][BLOCK_SIZE];

// Load Asub and Bsub from device memory to shared memory

// Each thread loads one element of each sub-matrix

As[row][col] = GetElement(Asub, row, col);

Bs[row][col] = GetElement(Bsub, row, col);

// Synchronize to make sure the sub-matrices are loaded

// before starting the computation

__syncthreads();

// Multiply Asub and Bsub together

for (int e = 0; e < BLOCK_SIZE; ++e)

Cvalue += As[row][e] * Bs[e][col];

// Synchronize to make sure that the preceding

// computation is done before loading two new

// sub-matrices of A and B in the next iteration

__syncthreads();

}

// Write Csub to device memory

// Each thread writes one element

SetElement(Csub, row, col, Cvalue);

}
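The loop structure of the blocked kernel can also be followed on the host. The sketch below is a hypothetical single-threaded emulation, not the guide's code: the two outer loops stand in for the grid of thread blocks, the `As` and `Bs` vectors stand in for the __shared__ arrays, and the separate load and compute phases correspond to the code before and after the first __syncthreads(). The name `tiledMatMulHost()` and its parameters are assumptions made for this illustration.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical host-only emulation of the blocked algorithm. Matrices are
// row-major; aHeight, aWidth and bWidth are assumed to be multiples of
// `tile`, matching the assumption made by MatMul().
inline std::vector<float> tiledMatMulHost(const std::vector<float>& A,
                                          const std::vector<float>& B,
                                          int aHeight, int aWidth, int bWidth,
                                          int tile)
{
    std::vector<float> C(static_cast<std::size_t>(aHeight) * bWidth, 0.0f);
    // Stand-ins for the __shared__ arrays As and Bs.
    std::vector<float> As(static_cast<std::size_t>(tile) * tile);
    std::vector<float> Bs(static_cast<std::size_t>(tile) * tile);

    for (int blockRow = 0; blockRow < aHeight / tile; ++blockRow) {
        for (int blockCol = 0; blockCol < bWidth / tile; ++blockCol) {
            for (int m = 0; m < aWidth / tile; ++m) {
                // Load phase: each (row, col) copies one element of each tile.
                for (int row = 0; row < tile; ++row) {
                    for (int col = 0; col < tile; ++col) {
                        As[row * tile + col] =
                            A[(blockRow * tile + row) * aWidth + m * tile + col];
                        Bs[row * tile + col] =
                            B[(m * tile + row) * bWidth + blockCol * tile + col];
                    }
                }
                // Compute phase: starts only after both tiles are fully loaded.
                for (int row = 0; row < tile; ++row) {
                    for (int col = 0; col < tile; ++col) {
                        float& Cvalue =
                            C[(blockRow * tile + row) * bWidth + blockCol * tile + col];
                        for (int e = 0; e < tile; ++e)
                            Cvalue += As[row * tile + e] * Bs[e * tile + col];
                    }
                }
            }
        }
    }
    return C;
}
```

On the device the tile loads and the multiply loop run concurrently across threads, so the two __syncthreads() calls are what enforce the phase ordering that the sequential loops provide here for free.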

Figure 3-2. Matrix Multiplication with Shared Memory

3.2.4 Page-Locked Host Memory

The runtime provides functions to allow the use of page-locked (also known as pinned)

host memory (as opposed to regular pageable host memory allocated by

malloc()):

cudaHostAlloc() and cudaFreeHost() allocate and free page-locked

host memory;

cudaHostRegister() page-locks a range of memory allocated by