Manual

manual

manual

manual

User Manual:

Open the PDF directly: View PDF ![]() .

.

Page Count: 310 [warning: Documents this large are best viewed by clicking the View PDF Link!]

- Introduction

- ec.Evolve and Utility Classes

- ec.EvolutionState and the ECJ Evolutionary Process

- Basic Evolutionary Processes

- Representations

- Vector and List Representations (The ec.vector Package)

- Genetic Programming (The ec.gp Package)

- GPNodes, GPTrees, and GPIndividuals

- Basic Setup

- Defining the Representation, Problem, and Statistics

- Initialization

- Breeding

- A Complete Example

- GPNodes in Depth

- GPTrees and GPIndividuals in Depth

- Ephemeral Random Constants

- Automatically Defined Functions and Macros

- Strongly Typed Genetic Programming

- Parsimony Pressure (The ec.parsimony Package)

- Grammatical Evolution (The ec.gp.ge Package)

- Push (The ec.gp.push Package)

- NEAT (The ec.neat Package)

- Rulesets and Collections (The ec.rule Package)

- Parallel Processes

- Additional Evolutionary Algorithms

- Coevolution (The ec.coevolve Package)

- Spatially Embedded Evolutionary Algorithms (The ec.spatial Package)

- Particle Swarm Optimization (The ec.pso Package)

- Differential Evolution (The ec.de Package)

- Multiobjective Optimization (The ec.multiobjective Package)

- Estimation of Distribution Algorithms

- Meta-Evolutionary Algorithms

- Resets (The ec.evolve Package)

The ECJ Owner’s Manual

A User Manual for the ECJ Evolutionary Computation Library

Sean Luke

Department of Computer Science

George Mason University

Manual Version 26

July 5, 2018

Where to Obtain ECJ

http://cs.gmu.edu/∼eclab/projects/ecj/

Copyright 2010–2017 by Sean Luke.

Thanks to Carlotta Domeniconi.

Get the latest version of this document or suggest improvements here:

http://cs.gmu.edu/∼eclab/projects/ecj/

This document is licensed

under the

Creative Commons Attribution-No Derivative Works 3.0 United

States License,

except for those portions of the work licensed differently as described in the next section. To view a copy

of this license, visit http://creativecommons.org/licenses/by-nd/3.0/us/ or send a letter to Creative Commons, 171

Second Street, Suite 300, San Francisco, California, 94105, USA. A quick license summary:

• You are free to redistribute this document.

•You may not modify, transform, translate, or build upon the document except for personal use.

• You must maintain the author’s attribution with the document at all times.

• You may not use the attribution to imply that the author endorses you or your document use.

This summary is just informational: if there is any conflict in interpretation between the summary and the actual license,

the actual license always takes precedence.

This document is was produced

in part through funding from grants 0916870 and 1317813 from the

National Science Foundation.

0

Contents

1 Introduction 7

1.1 AboutECJ ................................................ 7

1.2 Overview................................................. 9

1.3 Unpacking ECJ and Using the Tutorials . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 14

1.3.1 The ec Directory, the CLASSPATH, and jar files . . . . . . . . . . . . . . . . . . . . . . . 15

1.3.1.1 The ec/display Directory:ECJ’sGUI........................ 15

1.3.1.2 The ec/app Directory: Demo Applications . . . . . . . . . . . . . . . . . . . . 15

1.3.2 The docs Directory ....................................... 15

1.3.2.1 Tutorials........................................ 16

2ec.Evolve and Utility Classes 17

2.1 TheParameterDatabase ........................................ 18

2.1.1 Inheritance............................................ 19

2.1.2 KindsofParameters ...................................... 20

2.1.3 Namespace Hierarchies and Parameter Bases . . . . . . . . . . . . . . . . . . . . . . . . 22

2.1.4 Parameter Files in Jar Files . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 24

2.1.5 AccessingParameters ..................................... 25

2.1.6 ParameterMacros ....................................... 27

2.1.6.1 TheAliasMacro ................................... 27

2.1.7 Debugging Your Parameters . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 29

2.1.8 Building a Parameter Database from Scratch . . . . . . . . . . . . . . . . . . . . . . . . 30

2.2 Output .................................................. 32

2.2.1 Creating and Writing to Logs . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 32

2.2.2 QuietingtheProgram ..................................... 34

2.2.3 The ec.util.Code Class...................................... 34

2.2.3.1 Decoding the Hard Way . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 35

2.2.3.2 Decoding the Easy Way . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 36

2.3 Checkpointing.............................................. 37

2.3.1 Implementing Checkpointable Code . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 39

2.4 Threads and Random Number Generation . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 40

2.4.1 RandomNumbers ....................................... 40

2.4.2 Selecting Randomly from Distributions . . . . . . . . . . . . . . . . . . . . . . . . . . . 43

2.4.3 Thread-LocalStorage...................................... 45

2.4.4 MultithreadingSupport .................................... 45

2.5 Jobs.................................................... 46

2.6 The ec.Evolve Top-level......................................... 47

2.7 Integrating ECJ with other Applications or Libraries . . . . . . . . . . . . . . . . . . . . . . . . 49

2.7.1 ControlbyECJ ......................................... 49

2.7.2 Control by another Application or Library . . . . . . . . . . . . . . . . . . . . . . . . . 53

1

3ec.EvolutionState and the ECJ Evolutionary Process 55

3.1 CommonPatterns............................................ 57

3.1.1 Setup............................................... 57

3.1.2 SingletonsandCliques..................................... 57

3.1.3 Prototypes............................................ 57

3.1.4 TheFlyweightPattern ..................................... 58

3.1.5 Groups.............................................. 58

3.2 Populations, Subpopulations, Species, Individuals, and Fitnesses . . . . . . . . . . . . . . . . 59

3.2.1 Making Large Numbers of Subpopulations . . . . . . . . . . . . . . . . . . . . . . . . . 61

3.2.2 How Species Make Individuals . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 62

3.2.3 Reading and Writing Populations and Subpopulations . . . . . . . . . . . . . . . . . . 62

3.2.4 AboutIndividuals ....................................... 64

3.2.4.1 Implementing an Individual . . . . . . . . . . . . . . . . . . . . . . . . . . . . 64

3.2.5 AboutFitnesses......................................... 66

3.3 InitializersandFinishers........................................ 68

3.3.1 Population Files and Subpopulation Files . . . . . . . . . . . . . . . . . . . . . . . . . . 70

3.4 EvaluatorsandProblems........................................ 71

3.4.1 Problems............................................. 72

3.4.2 ImplementingaProblem ................................... 73

3.5 Breeders ................................................. 75

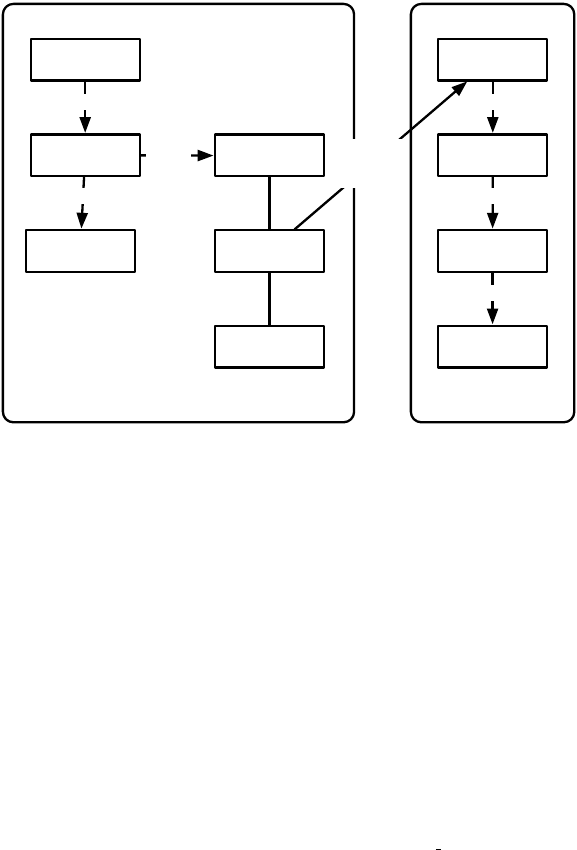

3.5.1 Breeding Pipelines and BreedingSources . . . . . . . . . . . . . . . . . . . . . . . . . . 78

3.5.1.1 AuxiliaryData.................................... 79

3.5.2 SelectionMethods........................................ 79

3.5.2.1 Implementing a Simple SelectionMethod . . . . . . . . . . . . . . . . . . . . . 80

3.5.2.2 StandardClasses................................... 81

3.5.3 BreedingPipelines ....................................... 84

3.5.3.1 Implementing a Simple BreedingPipeline . . . . . . . . . . . . . . . . . . . . 86

3.5.3.2 Standard Utility Pipelines . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 87

3.5.4 SettingupaPipeline...................................... 91

3.5.4.1 A Genetic Algorithm Pipeline . . . . . . . . . . . . . . . . . . . . . . . . . . . 91

3.5.4.2 A Genetic Programming Pipeline . . . . . . . . . . . . . . . . . . . . . . . . . 92

3.6 Exchangers................................................ 93

3.7 Statistics ................................................. 93

3.7.1 Creating a Statistics Chain . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 96

3.7.2 TabularStatistics ........................................ 96

3.7.3 QuietingtheStatistics ..................................... 99

3.7.4 Implementing a Statistics Object . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 99

3.8 Debugging an Evolutionary Process . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 101

4 Basic Evolutionary Processes 107

4.1 GenerationalEvolution......................................... 107

4.1.1 The Genetic Algorithm (The ec.simple Package)....................... 109

4.1.2 Evolution Strategies (The ec.es Package)........................... 111

4.2 Steady-State Evolution (The ec.steadystate Package) ........................ 115

4.2.1 SteadyStateStatistics ..................................... 118

4.2.2 Producing More than One Individual at a Time . . . . . . . . . . . . . . . . . . . . . . 118

4.3 Single-State Methods (The ec.singlestate Package).......................... 120

4.3.1 Simple Hill-Climbing and (1+1) . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 120

4.3.2 Steepest Ascent Hill-Climbing and (1+λ) .......................... 121

4.3.3 Steepest Ascent Hill-Climbing With Replacement and (1, λ) ............... 122

4.3.4 SimulatedAnnealing...................................... 123

2

5 Representations 125

5.1 Vector and List Representations (The ec.vector Package)...................... 125

5.1.1 Vectors.............................................. 126

5.1.1.1 Initialization ..................................... 127

5.1.1.2 Crossover....................................... 128

5.1.1.3 Multi-Vector Crossover . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 131

5.1.1.4 Mutation ....................................... 131

5.1.1.5 Heterogeneous Vector Individuals . . . . . . . . . . . . . . . . . . . . . . . . . 137

5.1.2 Lists ............................................... 139

5.1.2.1 UtilityMethods ................................... 139

5.1.2.2 Initialization ..................................... 140

5.1.2.3 Crossover....................................... 140

5.1.2.4 Mutation ....................................... 141

5.1.3 Arbitrary Genes: ec.vector.Gene ................................ 142

5.2 Genetic Programming (The ec.gp Package).............................. 144

5.2.1 GPNodes, GPTrees, and GPIndividuals . . . . . . . . . . . . . . . . . . . . . . . . . . . 146

5.2.1.1 GPNodes ....................................... 147

5.2.1.2 GPTrees........................................ 147

5.2.1.3 GPIndividual..................................... 148

5.2.1.4 GPNodeConstraints................................. 148

5.2.1.5 GPTreeConstraints.................................. 148

5.2.1.6 GPFunctionSet.................................... 148

5.2.2 BasicSetup ........................................... 149

5.2.2.1 DefiningGPNodes.................................. 150

5.2.3 Defining the Representation, Problem, and Statistics . . . . . . . . . . . . . . . . . . . . 151

5.2.3.1 GPData ........................................ 152

5.2.3.2 KozaFitness...................................... 153

5.2.3.3 GPProblem...................................... 154

5.2.3.4 GPNodeSubclasses ................................. 155

5.2.3.5 Statistics........................................ 157

5.2.4 Initialization........................................... 158

5.2.5 Breeding............................................. 162

5.2.6 ACompleteExample...................................... 169

5.2.7 GPNodesinDepth....................................... 172

5.2.8 GPTrees and GPIndividuals in Depth . . . . . . . . . . . . . . . . . . . . . . . . . . . . 176

5.2.8.1 Pretty-Printing Trees . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 177

5.2.8.2 GPIndividuals .................................... 180

5.2.9 Ephemeral Random Constants . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 180

5.2.10 Automatically Defined Functions and Macros . . . . . . . . . . . . . . . . . . . . . . . 183

5.2.10.1 AboutADFStacks.................................. 186

5.2.11 Strongly Typed Genetic Programming . . . . . . . . . . . . . . . . . . . . . . . . . . . . 189

5.2.11.1 InsideGPTypes ................................... 194

5.2.12 Parsimony Pressure (The ec.parsimony Package) ...................... 195

5.3 Grammatical Evolution (The ec.gp.ge Package) ........................... 197

5.3.1 GEIndividuals, GESpecies, and Grammars . . . . . . . . . . . . . . . . . . . . . . . . . 198

5.3.1.1 StrongTyping .................................... 199

5.3.1.2 ADFsandERCs ................................... 200

5.3.2 Translation and Evaluation . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 200

5.3.3 Printing ............................................. 202

5.3.4 Initialization and Breeding . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 203

5.3.5 DealingwithGP ........................................ 204

5.3.6 ACompleteExample...................................... 204

3

5.3.6.1 GrammarFiles.................................... 206

5.3.7 HowParsingisDone...................................... 206

5.4 Push (The ec.gp.push Package)..................................... 207

5.4.1 PushandGP .......................................... 209

5.4.2 Defining the Push Instruction Set . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 210

5.4.3 CreatingaPushProblem ................................... 211

5.4.4 Building a Custom Instruction . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 212

5.5 NEAT (The ec.neat Package)...................................... 213

5.5.1 Building a NEAT Application . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 214

5.5.1.1 Breeding ....................................... 214

5.5.1.2 Evaluation ...................................... 217

5.6 Rulesets and Collections (The ec.rule Package) ........................... 220

5.6.1 RuleIndividuals and RuleSpecies . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 220

5.6.2 RuleSets and RuleSetConstraints . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 221

5.6.3 Rules and RuleConstraints . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 224

5.6.4 Initialization........................................... 226

5.6.5 Mutation............................................. 226

5.6.6 Crossover ............................................ 227

6 Parallel Processes 229

6.1 Distributed Evaluation (The ec.eval Package) ............................ 229

6.1.1 TheMaster............................................ 230

6.1.2 Slaves .............................................. 231

6.1.3 OpportunisticEvolution.................................... 233

6.1.4 AsynchronousEvolution ................................... 235

6.1.5 TheMasterProblem....................................... 236

6.1.6 Noisy Distributed Problems . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 238

6.2 Island Models (The ec.exchange Package) .............................. 239

6.2.1 Islands.............................................. 239

6.2.2 TheServer............................................ 241

6.2.2.1 Synchronicity..................................... 242

6.2.3 InternalIslandModels..................................... 243

6.2.4 TheExchanger ......................................... 244

7 Additional Evolutionary Algorithms 247

7.1 Coevolution (The ec.coevolve Package)................................ 247

7.1.1 CoevolutionaryFitness .................................... 247

7.1.2 GroupedProblems....................................... 248

7.1.3 One-Population Competitive Coevolution . . . . . . . . . . . . . . . . . . . . . . . . . 250

7.1.4 Multi-Population Coevolution . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 252

7.1.4.1 Parallel and Sequential Coevolution . . . . . . . . . . . . . . . . . . . . . . . 254

7.1.4.2 Maintaining Context . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 255

7.1.5 Performing Distributed Evaluation with Coevolution . . . . . . . . . . . . . . . . . . . 256

7.2 Spatially Embedded Evolutionary Algorithms (The ec.spatial Package) . . . . . . . . . . . . . 257

7.2.1 ImplementingaSpace ..................................... 258

7.2.2 SpatialBreeding ........................................ 259

7.2.3 Coevolutionary Spatial Evaluation . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 260

7.3 Particle Swarm Optimization (The ec.pso Package)......................... 261

7.4 Differential Evolution (The ec.de Package).............................. 265

7.4.1 Evaluation............................................ 265

7.4.2 Breeding............................................. 265

7.4.2.1 The DE/rand/1/bin Operator . . . . . . . . . . . . . . . . . . . . . . . . . . . 267

4

7.4.2.2 The DE/best/1/bin Operator . . . . . . . . . . . . . . . . . . . . . . . . . . . 267

7.4.2.3 The DE/rand/1/either-or Operator . . . . . . . . . . . . . . . . . . . . . . . . 268

7.5 Multiobjective Optimization (The ec.multiobjective Package) ................... 269

7.5.0.1 The MultiObjectiveFitness class . . . . . . . . . . . . . . . . . . . . . . . . . . 269

7.5.0.2 The MultiObjectiveStatistics class . . . . . . . . . . . . . . . . . . . . . . . . . 271

7.5.0.3 The HypervolumeStatistics class . . . . . . . . . . . . . . . . . . . . . . . . . . 272

7.5.1 Selecting with Multiple Objectives . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 272

7.5.1.1 ParetoRanking.................................... 273

7.5.1.2 Archives ....................................... 274

7.5.2 NSGA-II/III (ec.multiobjective.nsga2 and ec.multiobjective.nsga3 Packages) . . . . . . . 274

7.5.3 SPEA2 (The ec.multiobjective.spea2 Package) ........................ 275

7.6 Estimation of Distribution Algorithms . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 276

7.6.1 PBIL ............................................... 276

7.6.2 CMA-ES............................................. 278

7.6.2.1 Parameters ...................................... 279

7.6.3 iAMaLGaM IDEA ....................................... 281

7.6.4 DOvS............................................... 283

7.7 Meta-Evolutionary Algorithms . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 285

7.7.1 TheTwoParameterFiles.................................... 286

7.7.2 DefiningtheParameters.................................... 288

7.7.3 StatisticsandMessages .................................... 290

7.7.4 Populations Versus Generations . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 291

7.7.5 Using Meta-Evolution with Distributed Evaluation . . . . . . . . . . . . . . . . . . . . 292

7.7.6 Customization ......................................... 293

7.8 Resets (The ec.evolve Package)..................................... 295

5

6

Chapter 1

Introduction

The purpose of this manual is to describe practically every feature of ECJ, an evolutionary computation

toolkit. It’s not a good choice of reading material if your goal is to learn the system from scratch. It’s very

terse, boring, and long, and not organized as a tutorial but rather as an encyclopedia. Instead, I refer you to

ECJ’s four tutorials and various other documentation that comes with the system. But when you need to

know about some particular gizmo that ECJ has available, this manual is where to look.

1.1 About ECJ

ECJ is an evolutionary computation framework written in Java. The system was designed for large, heavy-

weight experimental needs and provides tools which provide many popular EC algorithms and conventions

of EC algorithms, but with a particular emphasis towards genetic programming. ECJ is free open-source

with a BSD-style academic license (AFL 3.0).

ECJ is now well over fifteen years old and is a mature, stable framework which has (fortunately) exhibited

relatively few serious bugs over the years. Its design has readily accommodated many later additions, includ-

ing multiobjective optimization algorithms, island models, master/slave evaluation facilities, coevolution,

steady-state and evolution strategies methods, parsimony pressure techniques, and various new individual

representations (for example, rule-sets). The system is widely used in the genetic programming community

and is reasonably popular in the EC community at large. I myself have used it in over thirty or forty

publications.

A toolkit such as this is not for everyone. ECJ was designed for big projects and to provide many facilities,

and this comes with a relatively steep learning curve. We provide tutorials and many example applications,

but this only partly mitigates ECJ’s imposing nature. Further, while ECJ is extremely “hackable”, the initial

development overhead for starting a new project is relatively large. As a result, while I feel ECJ is an excellent

tool for many projects, other tools might be more apropos for quick-and-dirty experimental work.

Why ECJ was Made

ECJ’s primary inspiration comes from lil-gp [

18

], to which it owes much. Homage to

lil-gp may be found in ECJ’s command-line facility, how it prints out messages, and how it stores statistics.

Work on ECJ commenced in Fall 1998 after experiences with lil-gp in evolving simulated soccer robot teams

[6]. This project involved heavily modifying lil-gp to perform parallel evaluations, a simple coevolutionary

procedure, multiple threading, and strong typing. Such modifications made it clear that lil-gp could not be

further extended without considerable effort, and that it would be worthwhile developing an “industrial-

grade” evolutionary computation framework in which GP was one of a number of orthogonal features. I

intended ECJ to provide at least ten years of useful life, and I believe it has performed well so far.

7

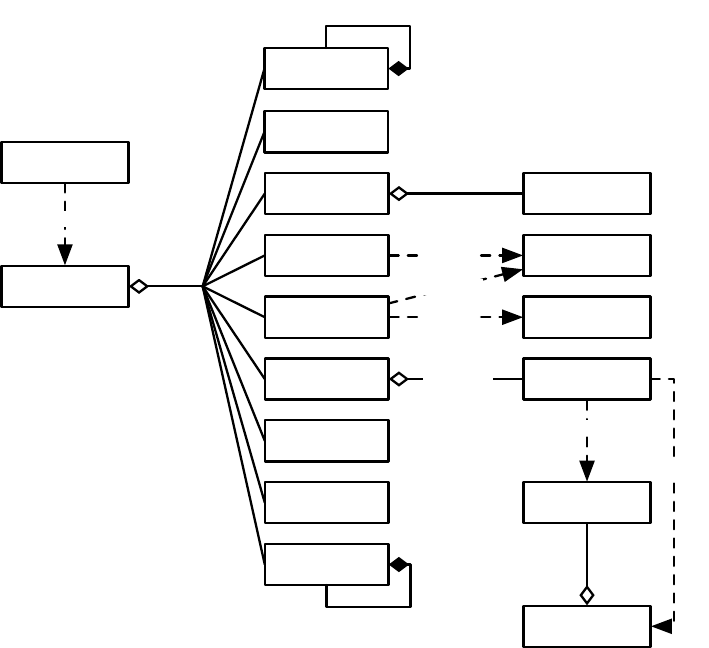

Evaluator

Pre-Evaluation Statistics

Post-Evaluation Statistics

Pre-Breeding

Exchange

Pre-Pre-Breeding Exchange Statistics

Post-Pre-Breeding Exchange Statistics

Breeding

Pre-Breeding Statistics

Post-Breeding Statistics

Post-Breeding

Exchange

Pre-Post-Breeding Exchange Statistics

Post-Post-Breeding Exchange Statistics

Initializer

Pre-Initialization Statistics

Post-Initialization Statistics

Initialize Exchanger, Evaluator

Finisher

Pre-Finishing Statistics

Shut Down Exchanger, Evaluator

Out of time or

want to quit?

Recover

from

Checkpoint

Reinitialize Exchanger, Evaluator

Optionally

Checkpoint

Optional Post-Checkpoint Statistics

Optional Pre-Checkpoint Statistics

Increment Generation

Want to quit?

NO

NO

YES

YES

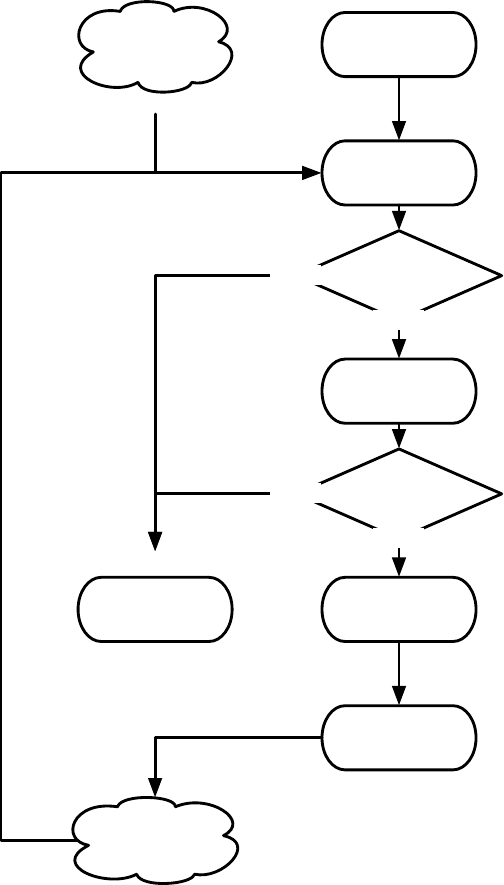

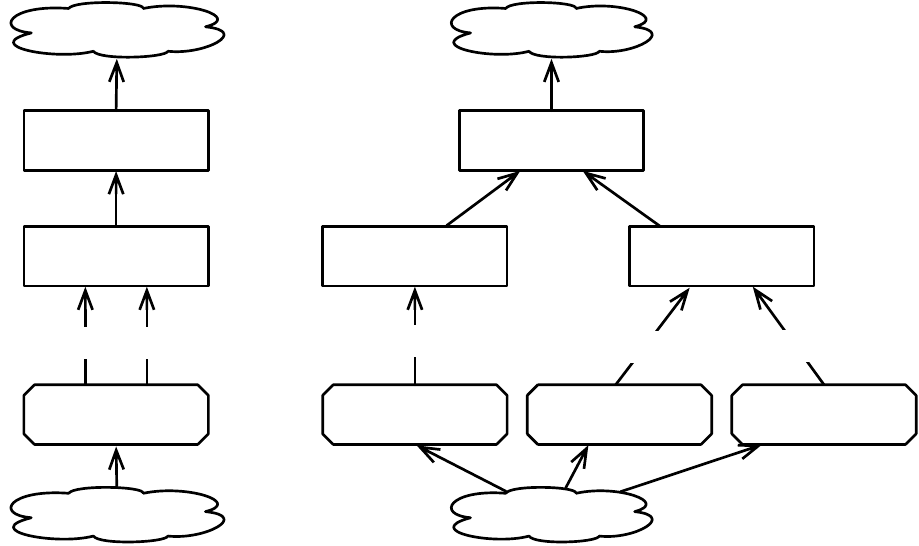

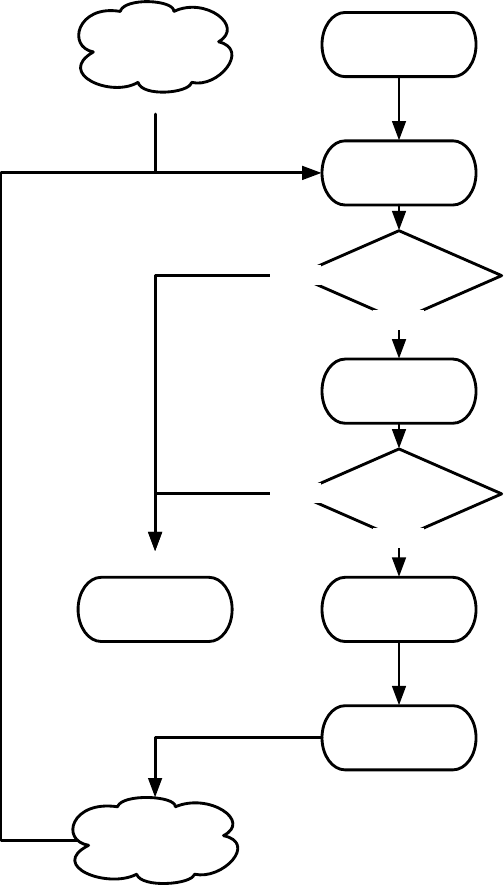

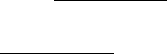

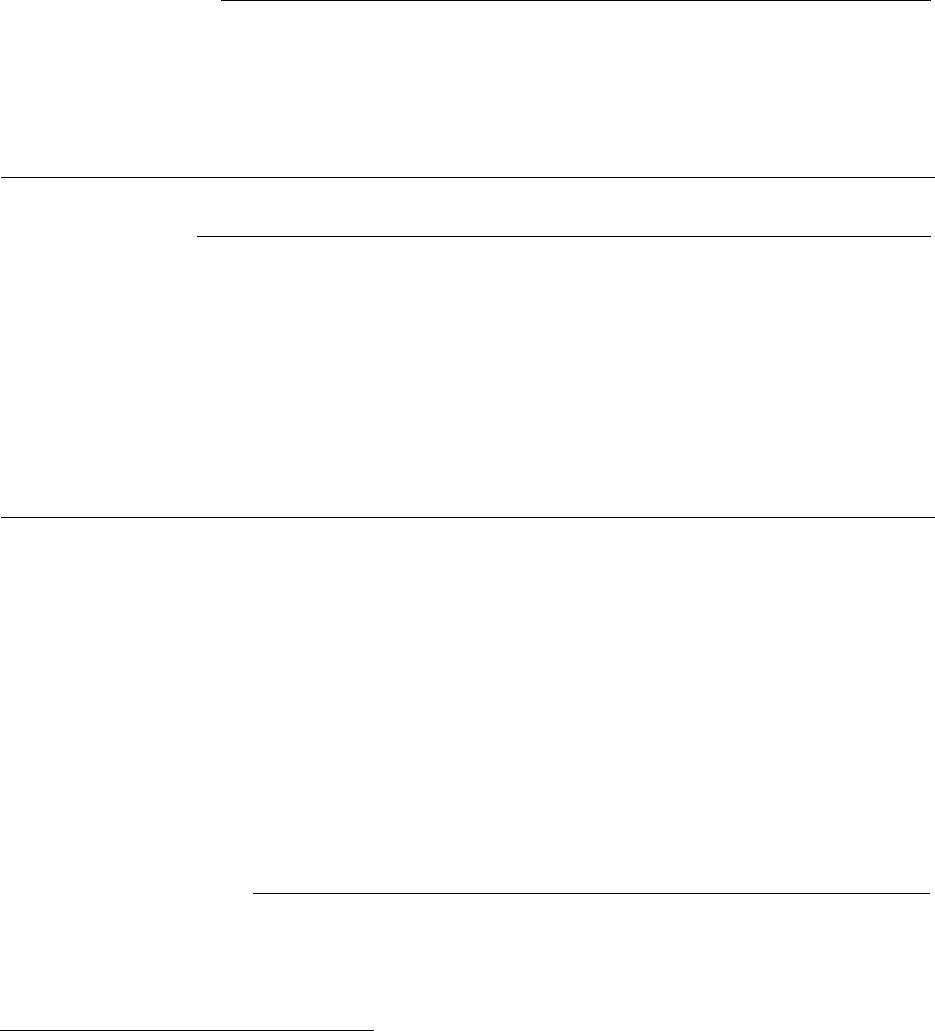

Figure 1.1 Top-Level Loop of ECJ’s SimpleEvolutionState class, used for basic generational EC algorithms. Various sub-operations are

shown occurring before or after the primary operations. The full population is revised each iteration.

8

1.2 Overview

ECJ is a general-purpose evolutionary computation framework which attempts to permit as many valid

combinations as possible of individual representation and breeding method, fitness and selection procedure,

evolutionary algorithm, and parallelism.

Top-level Loop

ECJ hangs the entire state of the evolutionary run off of a single instance of a subclass of

EvolutionState. This enables ECJ to serialize out the entire state of the system to a checkpoint file and to

recover it from the same. The EvolutionState subclass chosen defines the kind of top-level evolutionary loop

used in the ECJ process. We provide two such loops: a simple generational loop with optional elitism, and a

steady-state loop.

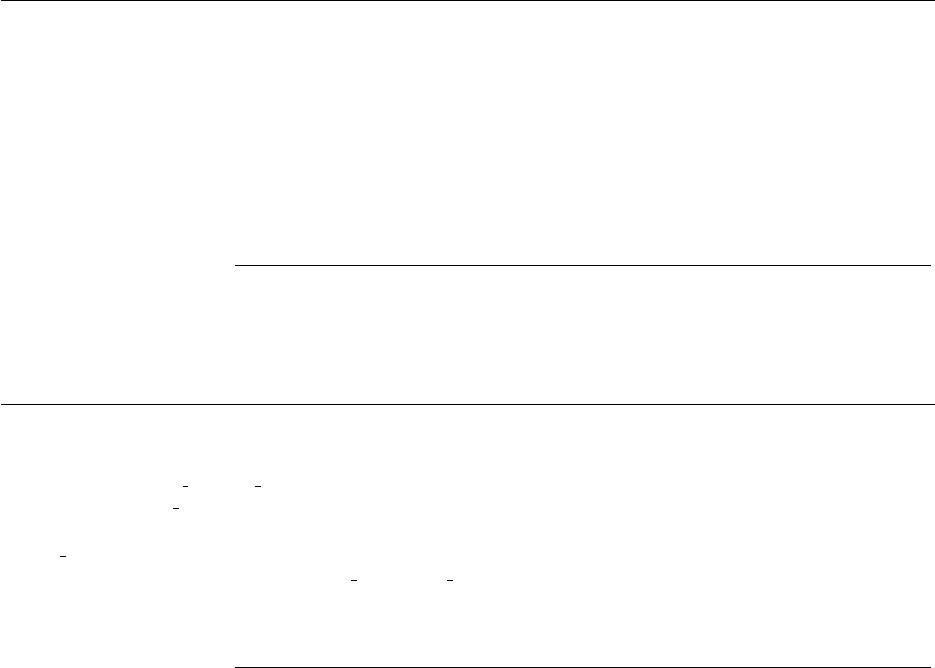

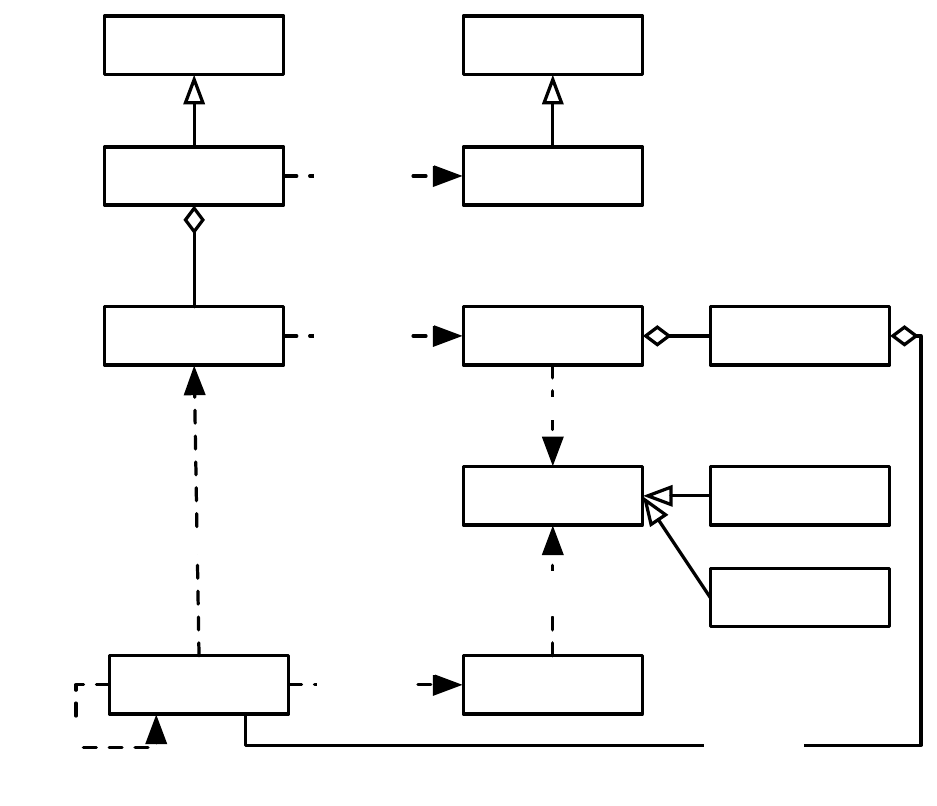

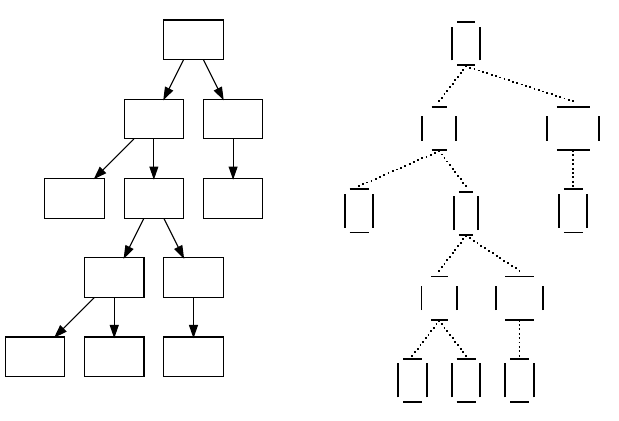

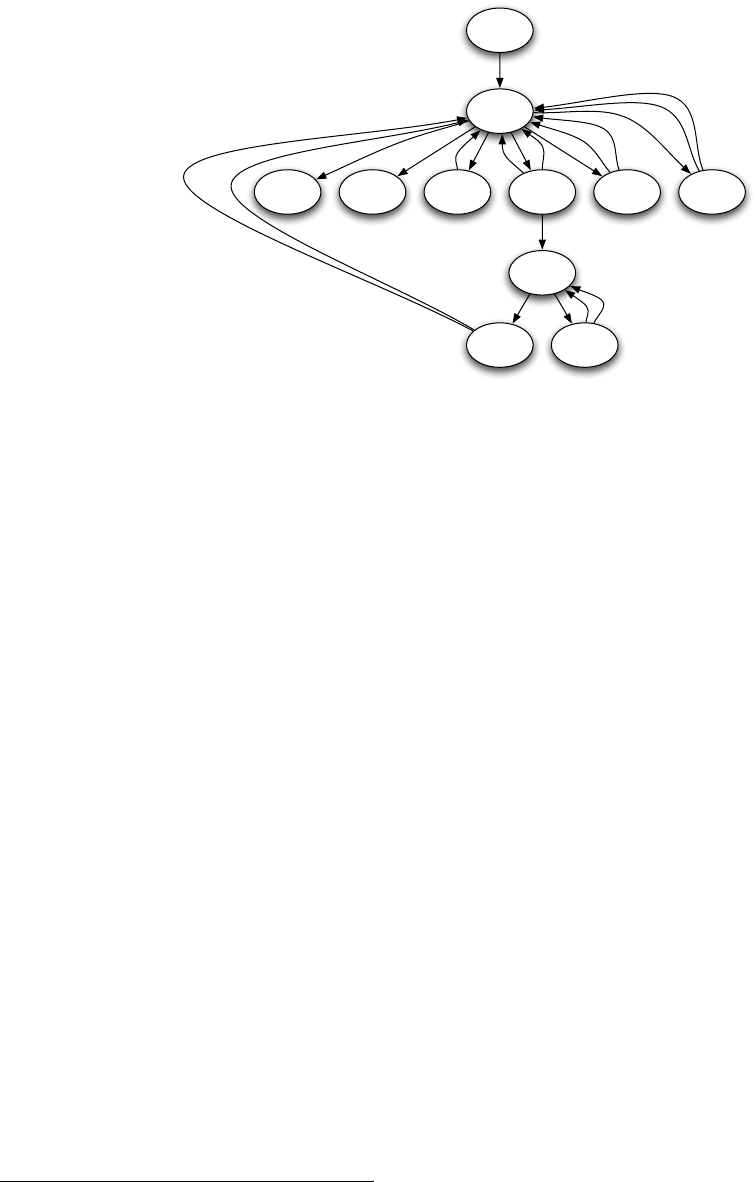

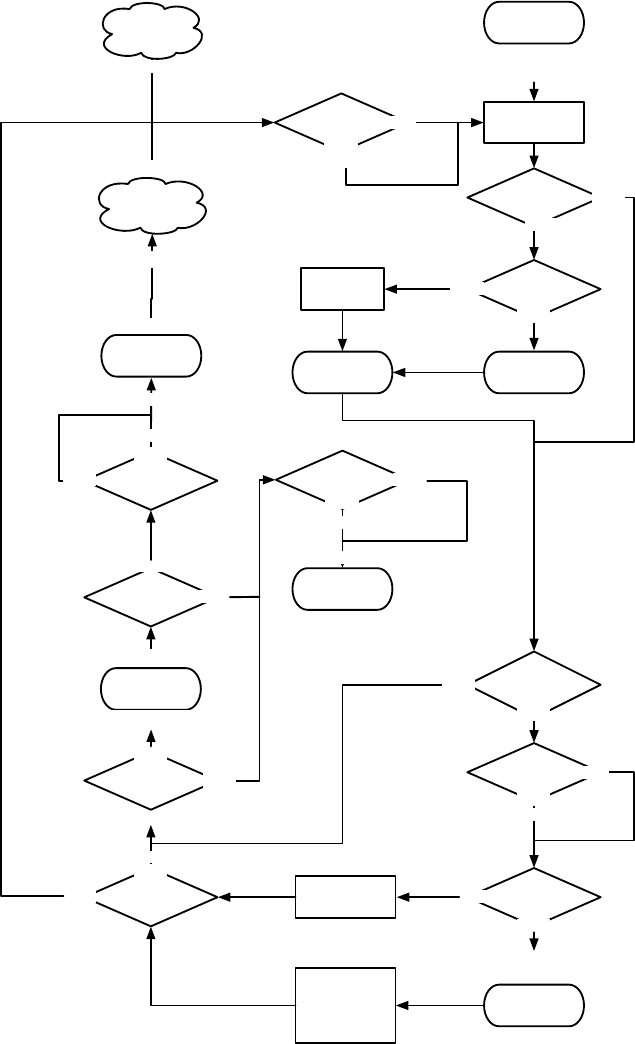

Figure 1.1 shows the top-level loop of the simple generational EvolutionState. The loop iterates between

breeding and evaluation, with an optional “exchange” period after each. Statistics hooks are called before

and after each period of breeding, evaluation, and exchanging, as well as before and after initialization of

the population and “finishing” (cleaning up prior to quitting the program).

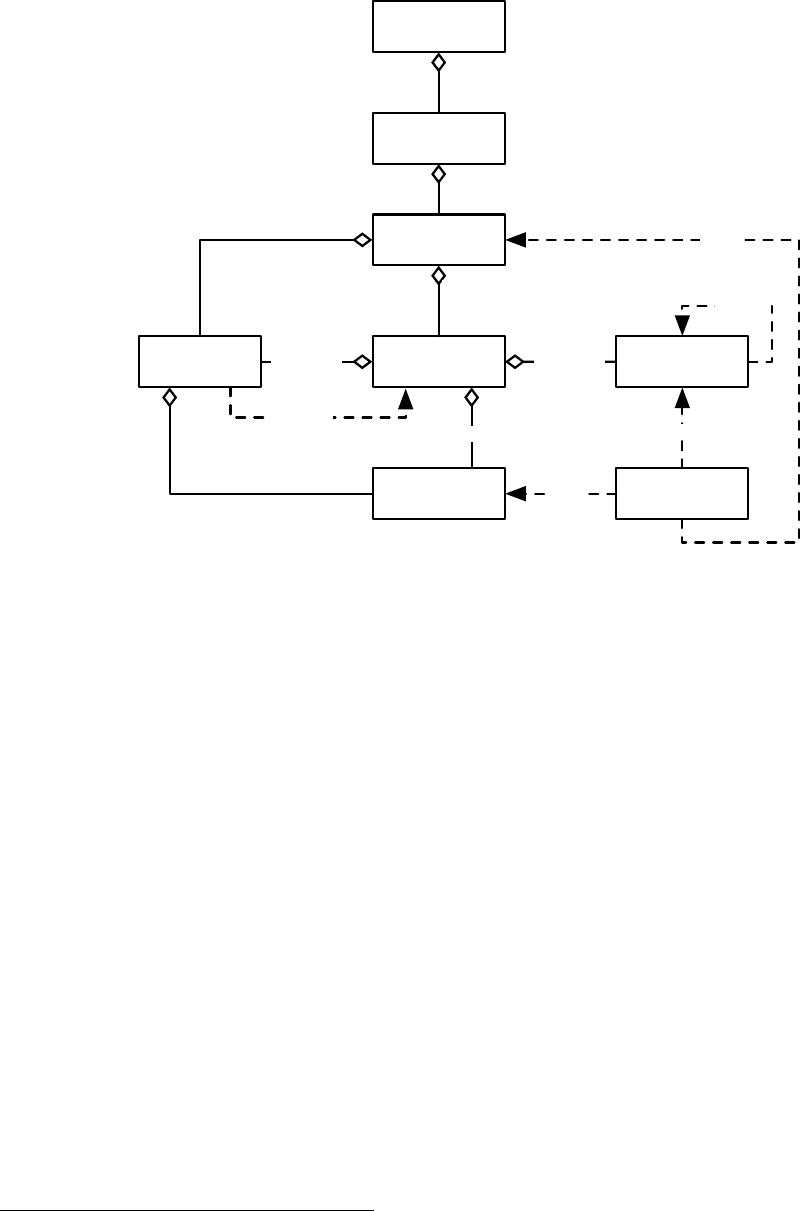

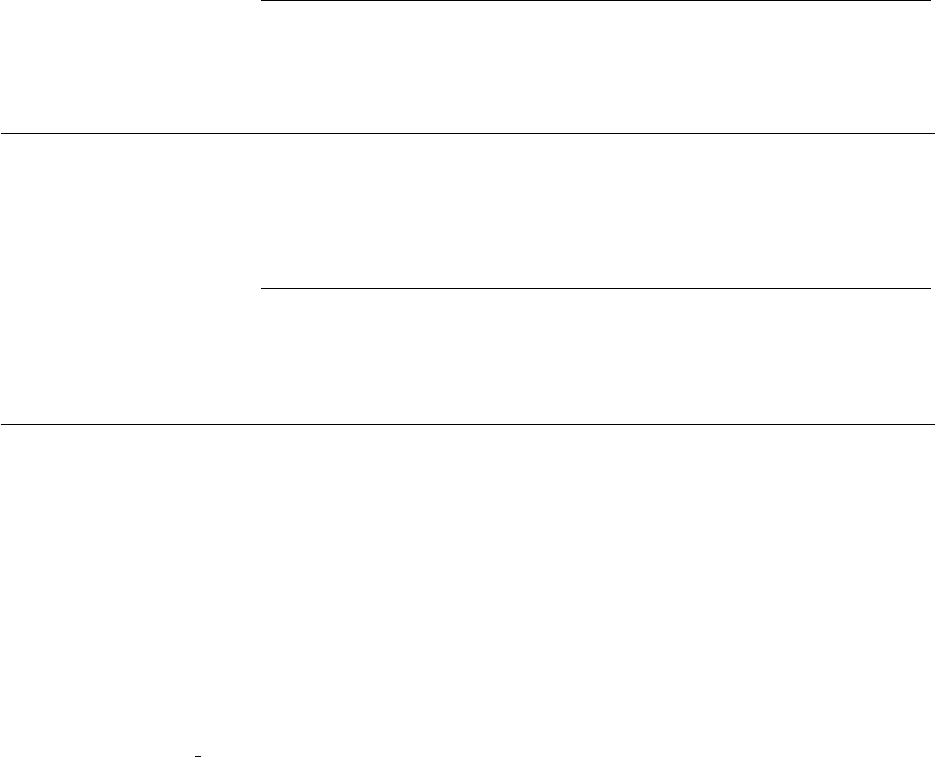

Breeding and evaluation are handled by singleton objects known as the Breeder and Evaluator respectively.

Likewise, population initialization is handled by an Initializer singleton, and finishing is done by a Finisher.

Exchanges after breeding and after evaluation are handled by an Exchanger. The particular versions of these

singleton objects are determined by the experimenter, though we provide versions which perform common

tasks. For example, we provide a traditional-EA SimpleEvaluator, a steady-state EA SteadyStateEvaluator, a

“single-population coevolution” CompetitiveEvaluator, and a multi-population coevolution MultiPopCoevolu-

tionaryEvaluator, among others. There are likewise custom breeders and initializers for different functions.

The Exchanger provides an opportunity for other hooks, notably internal and external island models. For ex-

ample, post-breeding exchange might allow external immigrants to enter the population, while emmigrants

might leave the population during post-evaluation exchange. These singleton operators comprise most of

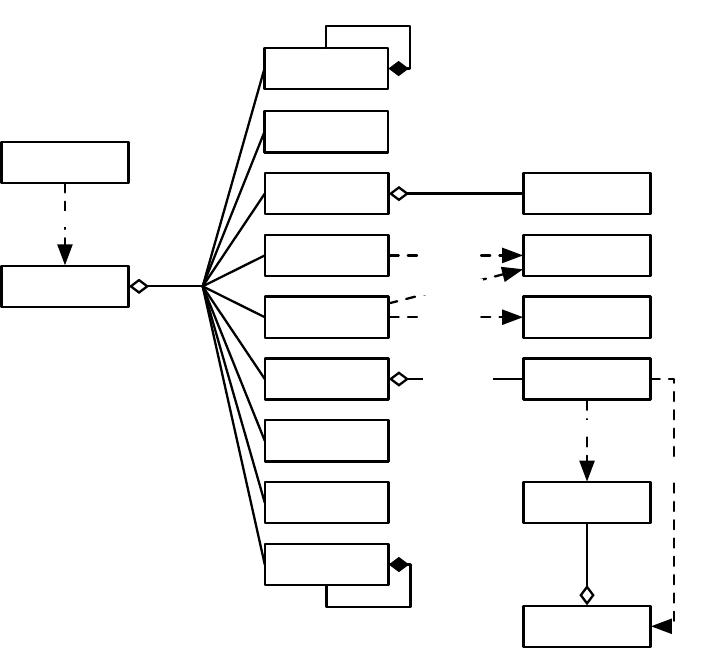

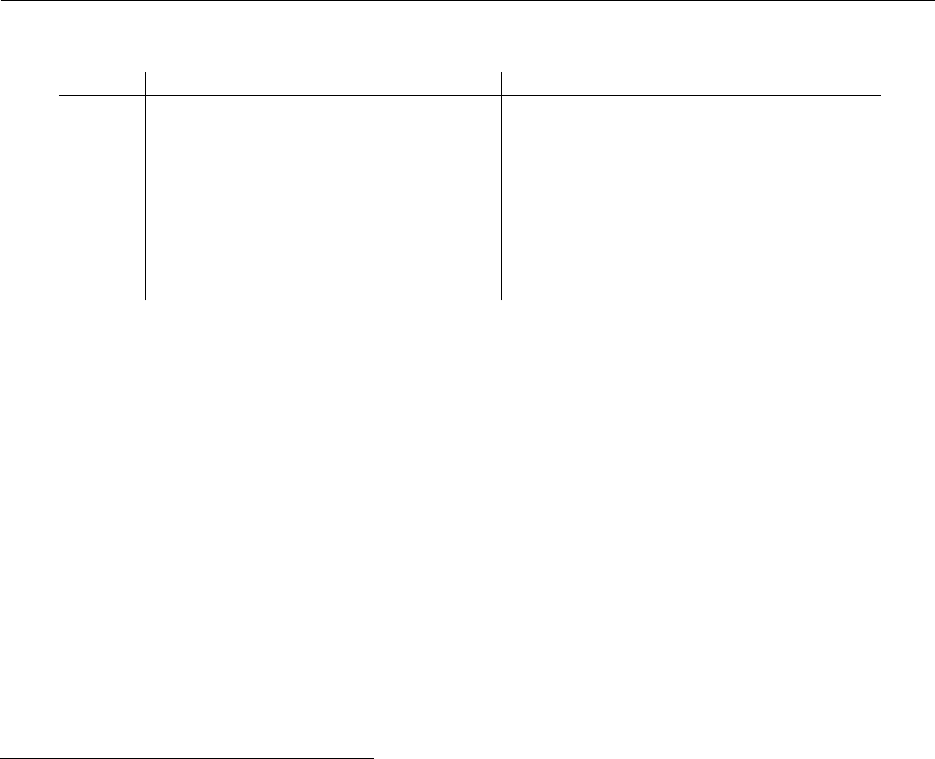

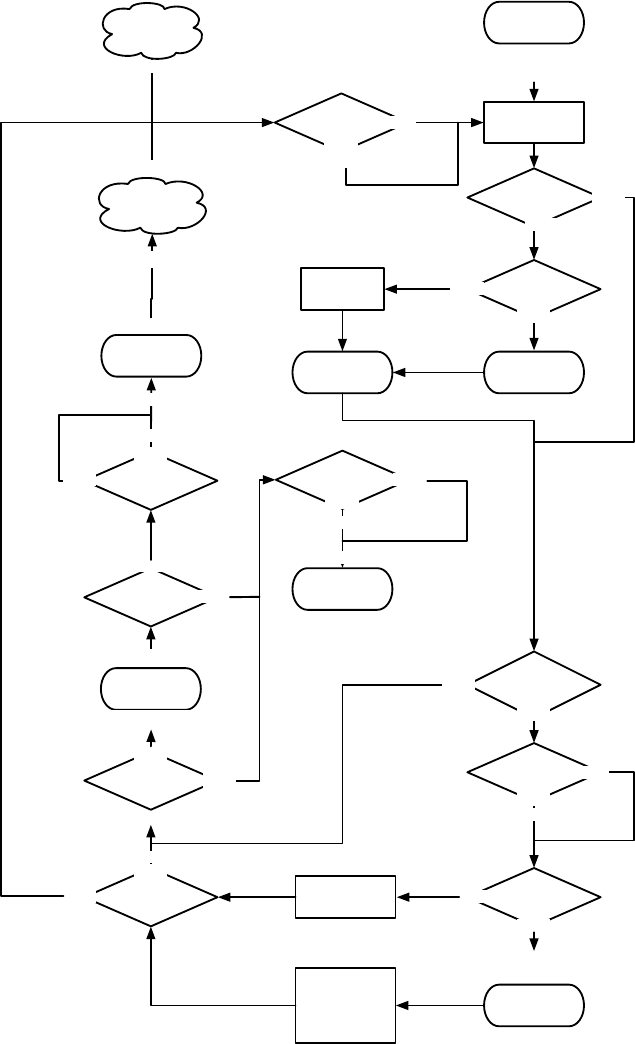

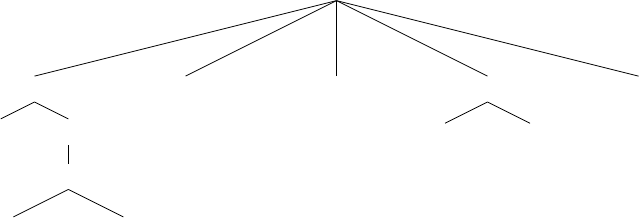

the high-level “verbs” in the ECJ system, as shown in Figure 1.2.

Parameterized Construction

ECJ is unusually heavily parameterized: practically every feature of the

system is determined at runtime from a parameter. Parameters define the classes of objects, the specific

subobjects they hold, and all of their initial runtime values. ECJ does this through a bootstrap class called

Evolve, which loads a ParameterDatabase from runtime parameter files at startup. Using this database, Evolve

constructs the top-level EvolutionState and tells it to “setup” itself. EvolutionState in turn calls subsidiary

classes (such as Evaluator) and tells them to “setup” themselves from the database. This procedure continues

down the chain until the entire system is constructed.

State Objects

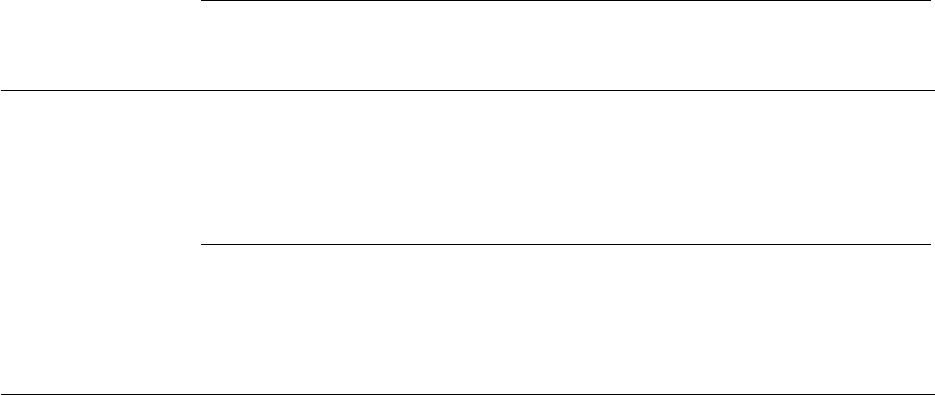

In addition to “verbs”, EvolutionState also holds “nouns” — the state objects representing

the things being evolved. Specifically, EvolutionState holds exactly one Population, which contains some

N

(typically 1) Subpopulations. Multiple Subpopulations permit experiments in coevolution, internal island

models, etc. Each Subpopulation holds some number of Individuals and the Species to which the Individuals

belong. Species is a flyweight object for Individual: it provides a central repository for things common to many

Individuals so they don’t have to each contain them in their own instances.

While running, numerous state objects must be created, destroyed, and recreated. As ECJ only learns the

specific classes of these objects from the user-defined parameter file at runtime, it cannot simply construct

them using Java’s new operator. Instead such objects are created by constructing a prototype object at startup

time, and then using this object to stamp out copies of itself as often as necessary. For example, Species

contains a prototypical Individual. When new Individuals must be created for a given Subpopulation, they are

copied from the Subpopulation’s Species and then customized. This allows different Subpopulations to use

different Individual representations.

In keeping with its philosophy of orthogonality, ECJ defines Fitnesses separate from Individuals (represen-

tations), and provides both single-objective and multi-objective Fitness subclasses. In addition to holding a

prototypical Individual, Species also hold the prototypical Fitness to be used with that kid of Individual.

9

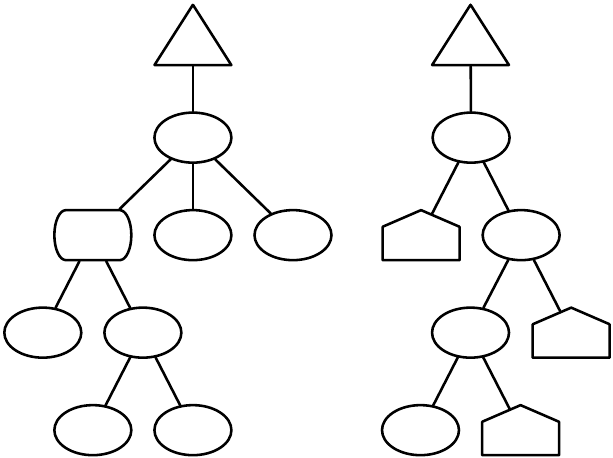

EvolutionState

Initializer

Breeder

Evaluator

Finisher

Statistics

Exchanger

Problem

Mersenne Twister

RNG

Output

Parameter

Database

11

11

makes Population

Breeding Pipelineapplies

Evolve

makes

updates

Fitness

updates

Individual

evaluates

1

n

1

1

0..n

1

prototype

Log

n1

0..n

1

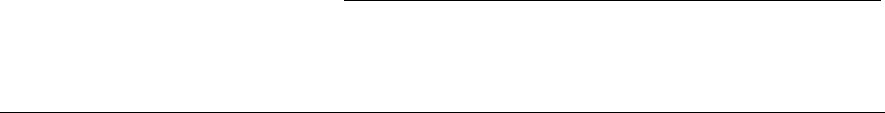

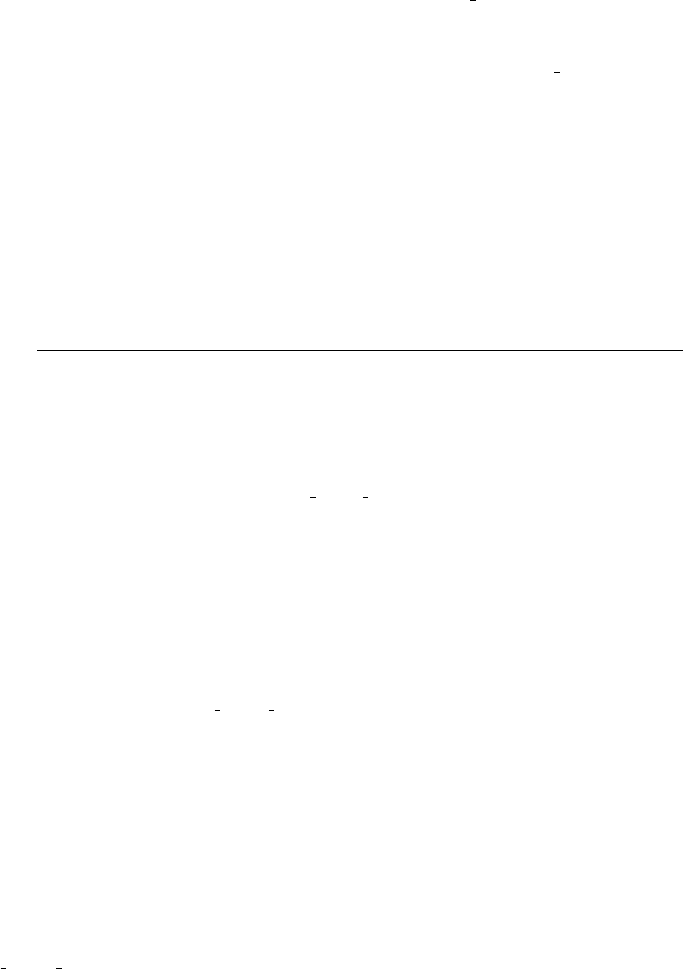

Figure 1.2 Top-Level operators and utility facilities in EvolutionState, and their relationship to certain state objects.

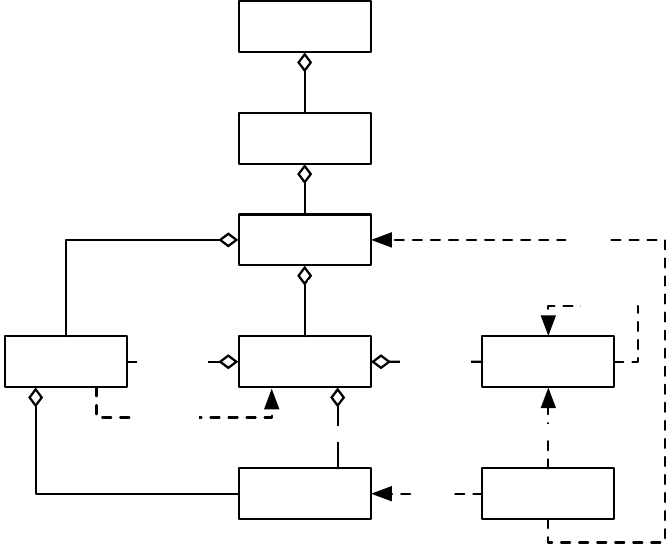

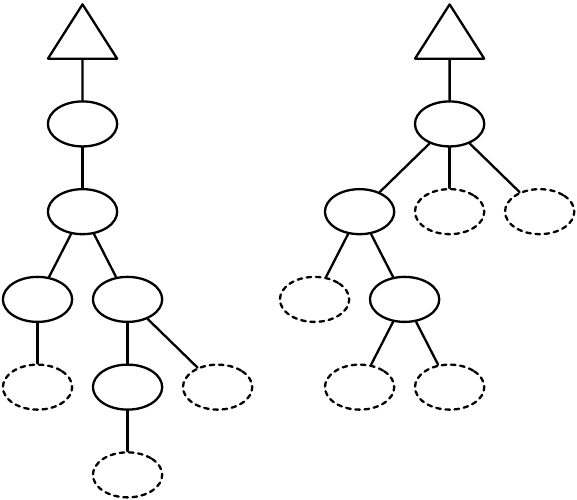

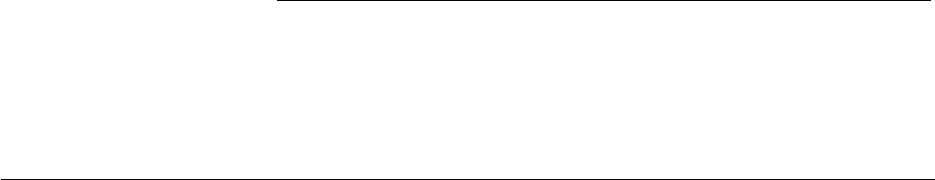

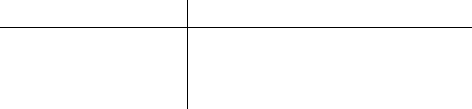

Breeding

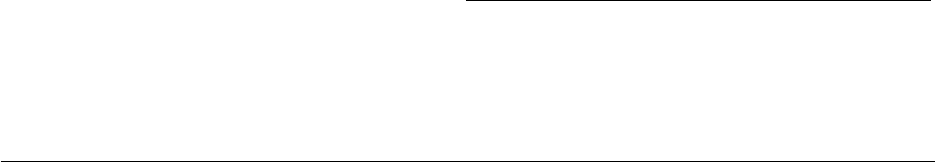

ASpecies holds a prototypical breeding pipeline which is cloned by the Breeder and used per-thread

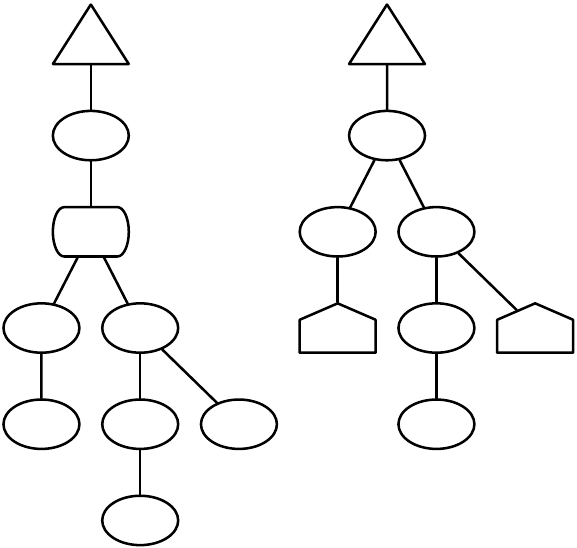

to breed individuals and form the next-generation population. Breeding pipelines are tree structures where

a node in the tree filters incoming Individuals from its child nodes and hands them to its parents. The leaf

nodes in the tree are SelectionMethods which simply choose Individuals from the old subpopulation and

hand them off. There exist SelectionMethods which perform tournament selection, fitness proportional

selection, truncation selection, etc. Nonleaf nodes in the tree are BreedingPipelines, many of which copy and

modify their received Individuals before handing them to their parent nodes. Some BreedingPipelines are

representation-independent: for example, MultiBreedingPipeline asks for Individuals from one of its children at

random according to some probability distribution. But most BreedingPipelines act to mutate or cross over

Individuals in a representation-dependent way. For example, the GP CrossoverPipeline asks for one Individual

of each of its two children, which must be genetic programming Individuals, performs subtree crossover on

those Individuals, then hands them to its parent.

A tree-structured breeding pipeline allows for a rich assortment of experimenter-defined selection and

breeding proceses. Further, ECJ’s pipeline is copy-forward:BreedingPipelines must ensure that they copy

Individuals before modifying them or handing them forward, if they have not been already copied. This

guarantees that new Individuals are copies of old ones in the population, and furthermore that multiple

pipelines may operate on the same Subpopulation in different threads without the need for locking. ECJ may

apply multiple threads to parallelize the breeding process without the use of Java synchronization at all.

10

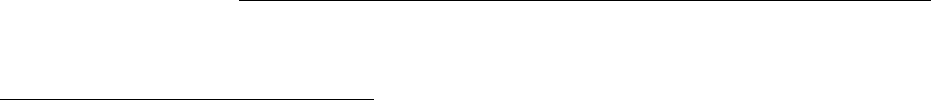

EvolutionState

Population

Subpopulation

Individual

1

1

1..n

1

1..n

1

Species

Fitness

1

1

prototype

1

1

prototype

1 1

Breeding Pipeline

prototype

1

1

flyweight

1..n 1

Selection Method

child of

0..n

1

child of

0..n

1

uses

uses

1

1

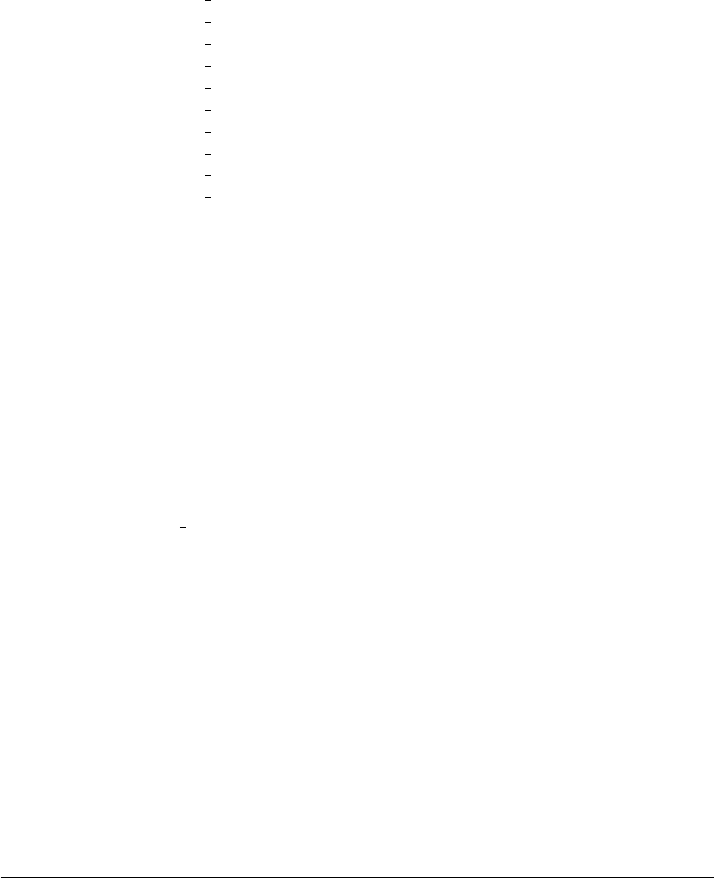

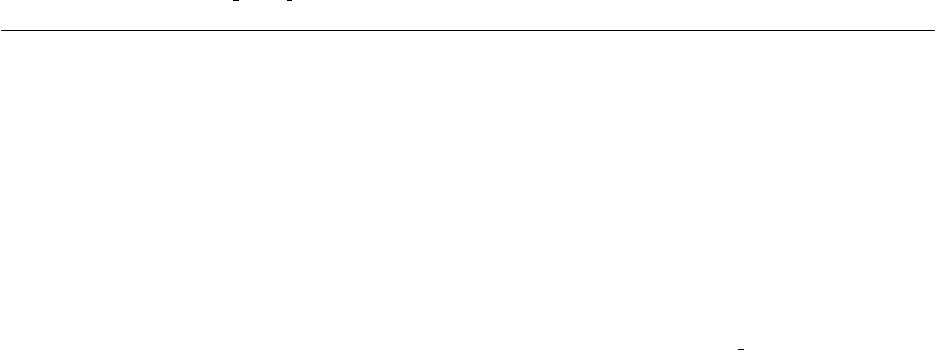

Figure 1.3 Top-Level data objects used in evolution.

Evaluation

The Evaluator performs evaluation of a population by passing one or (for coevolutionary

evaluation) several Individuals to a Problem subclass which the Evaluator has cloned off of its prototype.

Evaluation may too be done in multithreaded fashion with no locking, using one Problem per thread.

Individuals may also undergo repeated evaluation in coevolutionary Evaluators of different sorts.

In most projects using ECJ, the primary task is to construct an appropriate Problem subclass. The task

of the Problem is to assess the fitness of the Individual(s) and set its Fitness accordingly. Problem classes also

report if the ideal Individual has been discovered.

Utilities

In addition to its ParameterDatabase, ECJ also uses a checkpointable Output convenience facility

which maintains various streams, repairing them after checkpoint. Output also provides for message logging,

retaining in memory all messages during the run, so that on checkpoint recovery the messages are printed

out again as before. Other utilities include population distribution selectors, searching and sorting tools, etc.

The quality of a random number generator is important for a stochastic optimization system. As such,

ECJ’s random number generator was the very first class written in the system: it is a Java implementation

of the highly respected Mersenne Twister algorithm [

12

] and is the fastest such implementation available.

Since ECJ’s release, the ECJ MersenneTwister and MersenneTwisterFast classes have found their way in a

number of unrelated public-domain systems, including the popular NetLogo multiagent simulator [

26

].

MersenneTwisterFast is also shared in ECJ’s sister software, the MASON multiagent simulation toolkit [8].

Representations and Genetic Programming

ECJ allows you to specify any genome representation you

like. Standard representation packages in ECJ provide functionality for vectors of all Java data types;

arbitrary-length lists; trees; and collections of objects (such as rulesets).

ECJ is perhaps best known for its support of “Koza”-style tree-structured genetic programming repre-

sentations. ECJ represents these individuals as forests of parse-trees, each tree equivalent to a single Lisp

11

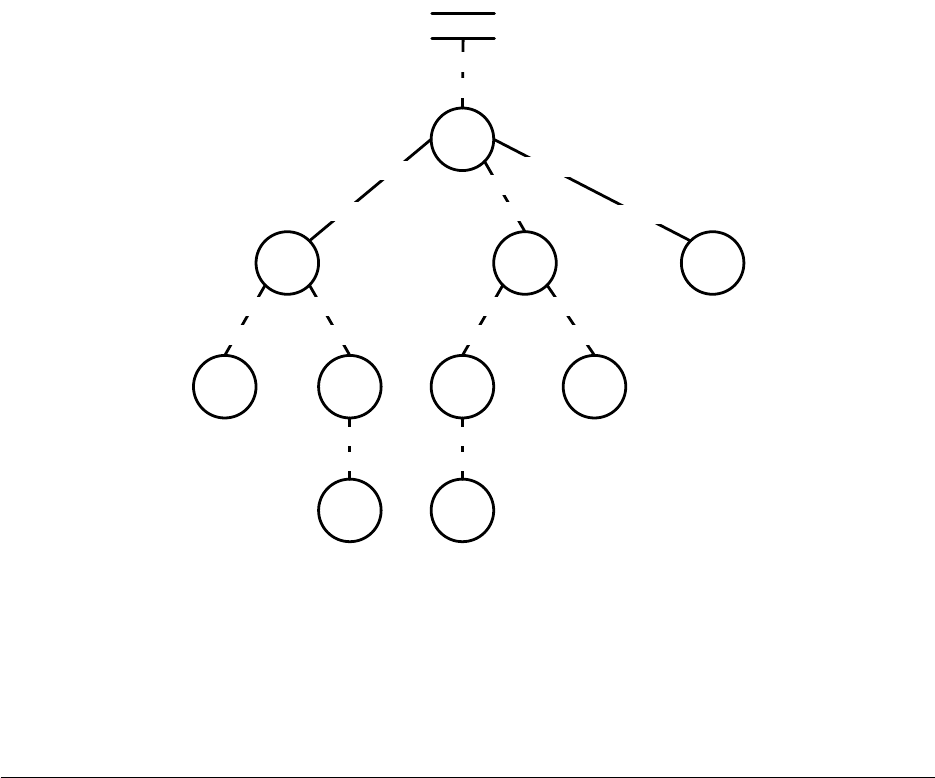

ir

2.3

*

if

and

on-

wall tick>

20 3

bool

bool

bool

bool

bool

bool

int

int

int

int

int, float

float

6

int, float

int

int, float

float

int, float

float

tree

int, float

float

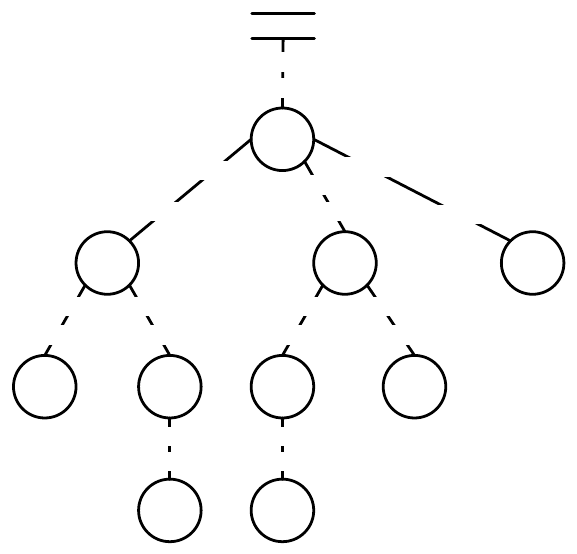

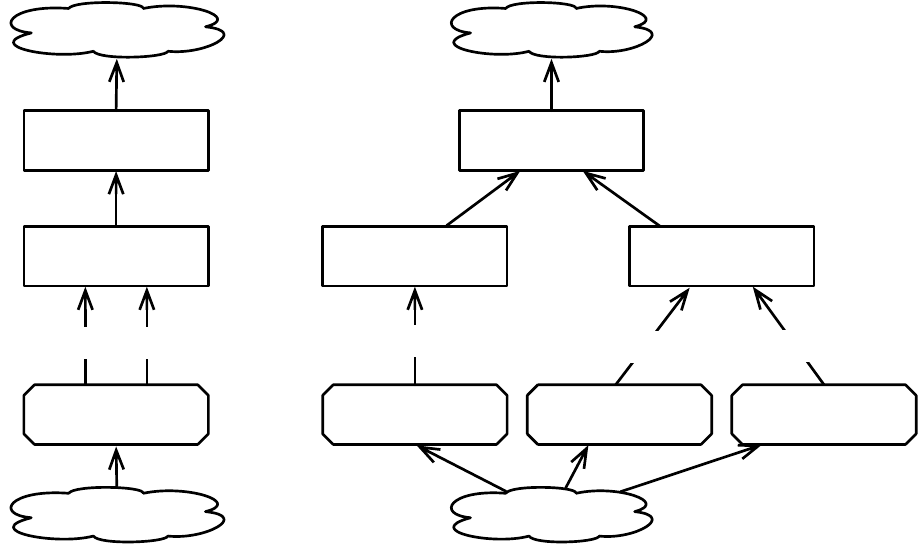

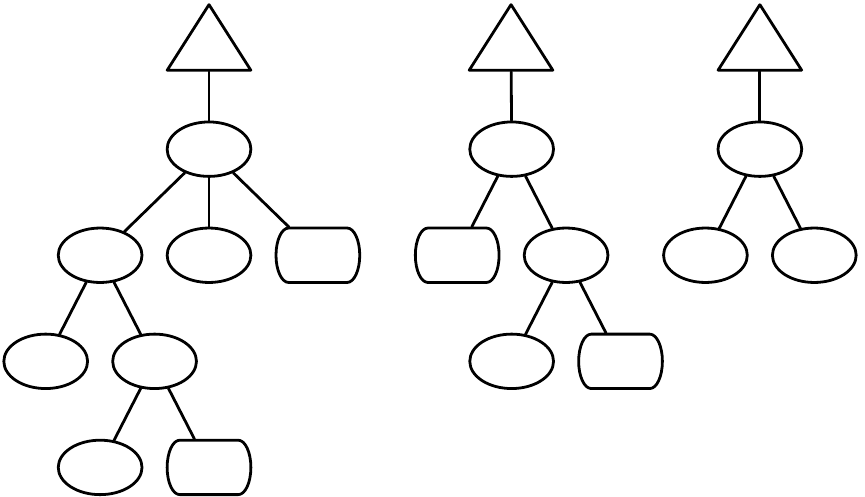

Figure 1.4 A typed genetic programming parse tree.

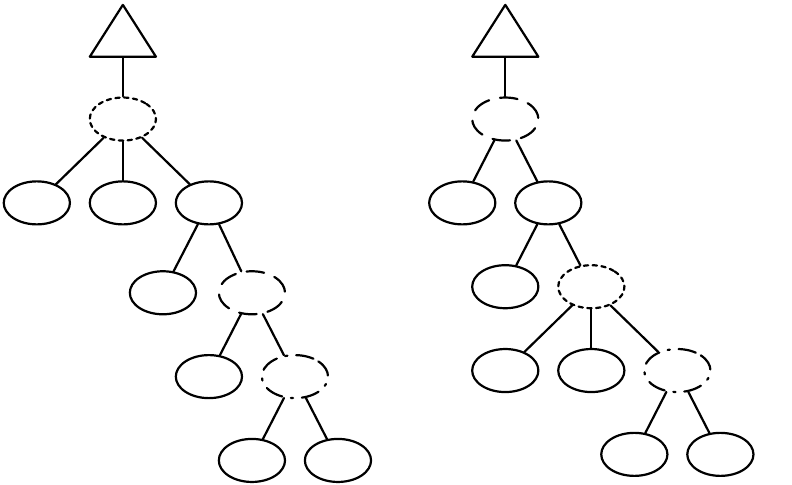

s-expression. Figure 1.4 shows a parse-tree for a simple robot program, equivalent to the Lisp s-expression

(if (and on-wall (tick>20) (∗(ir 3) 6) 2.3).

In C this might look like

(onWall && tick >20) ? ir(3) * 6 : 2.3.

This notionally says “If I’m on the wall and my tick-count is greater than 20, then return the value of my

third infrared sensor times six, else return 2.3”. Such parse-trees are typically evaluated by executing their

programs in a test environment, and modified via subtree crossover (swapping subtrees among individuals)

or various kinds of mutation (replacing a subtree with a randomly-generated one, perhaps).

ECJ allows multiple subtrees for various experimental needs: Automatically Defined Functions (ADFs —

a mechanism for evolving subroutine calls [

4

]), or parallel program execution, or evolving teams of programs.

Along with ADFs, ECJ provides built-in support for Automatically Defined Macros (ADMs) [

20

] and

Ephemeral Random Constants (ERCs [3], such as the numbers 20, 3, 6, and 2.3 in Figure 1.4).

Genetic programming trees are constructed out of a “primorial soup” of function templates (such as

on-wall or 2.3. Early forms of genetic programming were typeless: though such templates had a predefined

arity (number of arguments), any node could be connected to any other. Many genetic programming needs

require more constraints than this. For example, the node if might expect a boolean value in its first argument,

and integers or floats in the second and third arguments, and return a float when evaluated. Similarly and

might take two booleans as arguments and return a boolean, while

∗

would take ints or floats as arguments

and return a float.

Such types are often associated with the kinds of data passed from node to node, but they do not have

to be. Typing might be used to constrain certain nodes to be evaluated in groups or in a certain order: for

example, a function type-block might insist that its first argument be of type foo and its second argument be

of type ar to make certain that a foo node be executed before a ar node.

ECJ permits a simple static typing mechanism called set-based typing, which is suitable for many such

tasks. In set-based typing, the return type and argument types of each node are each defined to be sets of

type symbols (for example,

{ool}

or

{

foo, bar, baz

}

, or

{

int, float

}

. The desired return type for the tree’s root is

12

similarly defined. A child node is permitted to fit into the argument slot of a parent node if the child node’s

return type and type of the that argument slot in the parent are compatible. We define types to be compatible

if their set intersection is nonempty (that is, they share at least one type symbol).

Set-based typing is sufficient for the typing requirements found in many programming languages,

including ones with type hierarchies. It allows, among other things, for nodes such as

∗

to accept either

integers or floats. However there are considerable restrictions on the power of set-based typing. It’s often

useful for the return type of a node to change based on the particular nodes which have plugged into it as

arguments. For example,

∗

might be defined as returning a float if at least one of its arguments returns floats,

but returning an integer if both of its arguments return integers. if might be similarly defined not to return a

particular type, but to simply require that its return type and the second and third argument types must all

match. Such “polymorphic” typing is particularly useful in situations such as matrix multiplication, where

the operator must place constraints on the width and height of its arguments and the final returned matrix.

In this example, it’s also useful to have an infinite number of types (perhaps to represent matrices of varying

widths or heights).

ECJ does not support polymorphic typing out of the box simply because it is difficult to implement

many if not most common tree modification and generation algorithms using polymorphic typing: instead,

set-based typing is offered to handle as many common needs as can be easily done.

Out of the Box Capabilities ECJ provides support out-of-the-box for a bunch of algorithm options:

•

Generational algorithms:

(µ

,

λ)

and

(µ+λ)

Evolution Strategies, the Genetic Algorithm, Genetic

Programming variants, Grammatical Evolution, PushGP, and Differential Evolution

• Steady-State evolution

• Parsimony pressure algorithms

• Spatially-embeded evolutionary algorithms

• Random restarts

• Multiobjective optimization, including the NSGA-II and SPEA2 algorithms.

• Cooperative, 1-Population Competitive, and 2-Population Competitive coevolution.

• Multithreaded evaluation and breeding.

• Parallel synchronous and asynchronous Island Models spread over a grid of computers.

• Internal synchronous Island Models internally in a single ECJ process.

• Massive parallel generational fitness evaluation of individuals on remote slave machines.

•

Asynchronous Evolution, a version of steady-state evolution with massive parallel fitness evaluation

on remote slave machines.

•

Opportunistic Evolution, where remote slave machines run their own mini-evolutionary processes for

a while before sending individuals back to the master process.

• Internal synchronous Island Models internally in a single ECJ process.

• Meta-Evolution

• A large number of selection and breeding operators

ECJ also has a GUI, though in truth I nearly universally use the command-line.

Idiosyncracies

ECJ was developed near the introduction of Java and so has a lot of historical idiosyncra-

cies.

1

Some of them exist to this day because of conservatism: refactoring is disruptive. If you code with ECJ,

1It used to have a lot more — I’ve been weeding out ones that I think are unnecessary nowadays!

13

you’ll definitely have to get used to one or more of the following:

•

No generics at all, few iterators or enumerators, no Java features beyond 1.4 (including annotations),

and little use of the Java Collections library. This is part historical, and part my own dislike of Java’s

byzantine generics implementation, but it’s mostly efficiency. Generics are very slow when used with

basic data types, as they require boxing and unboxing. The Java Collections library is unusually badly

written in many places internally: and anyway, for speed we tend to work directly with arrays.

•

Hand-rolled socket code. With one exception (optional compression), ECJ’s parallel facility doesn’t

rely on other libraries.

•

ECJ loads nearly every object from its parameter database. This means that you’ll rarely see the new

keyword in ECJ, nor any constructors. Instead ECJ’s usual “constructor” method is a method called

setup(...), which sets up an object from the database.

•

A proprietary logging facility. ECJ was developed before the existence of java.util.logging. Partly out of

conservatism, I am hesitant to rip up all the pervasive logging just to use Sun’s implementation (which

isn’t very good anyway).

•

A parameter database derived from Java’s old java.util.Properties list rather than XML. This is historical

of course. But seriously, do I need a justification to avoid XML?

•

Mersenne Twister random number generator. java.lang.Random is grotesquely bad, and systems which

use it should be shunned.

• A Makefile. ECJ was developed before Ant and I’ve personally never needed it.

1.3 Unpacking ECJ and Using the Tutorials

ECJ is designed to be built either with

maven

or with

make

. If you build ECJ with maven, then it will

package all of ECJ into a single

jar file

. If you build with

make

, you have the option of packaging into a

single

jar file

or into a

directory of class files and resources

. Building to a directory is how ECJ classically

was constructed, but nowadays a jar file is more useful. However even if you build to a jar file, it’s still useful

to have the resources and java files on-hand so you know what various ECJ applications can do and how to

run them.

After unpacking ECJ, you’re left with one directory called ecj where you will find several items:

• A top-level README.md file, which should be self-explanatory in its importance.

• ECJ’s LICENSE file, which describes the primary license (AFL 3.0, a BSD-style academic license).

• A CHANGES log, which lists all past changes to all versions (including the latest).

• A Makefile for building via make.

• A pom.xml for building via Maven.

• The docs directory. This contains most of the ECJ documentation.

•

The start directory. This contains various scripts for starting up ECJ: though in truth we rarely use

them.

•

The lib directory. This contains any additional libraries that maven (not make) will need to build ECJ

(to build with make, you’ll use a different mechanism).

•

The classes directory. This contains the class files, and possibly other resources (see next bullet), for ECJ

after it has been built.

14

•

The src directory, which contains the main Java code and the internal library test code. Of particular

interest to you will be the directories

src/main/java/ec/

, which is the top-level package for ECJ, and

src/main/java/resources/ec/. The src/main/java/ec/ directory holds the top-level package for ECJ,

ec. The src/main/java/resources/ec directory is an identical-structured directory containing various

resources (notably parameter files). If you build with

make

, then these resources will get merged into

the classes directory.

1.3.1 The ec Directory, the CLASSPATH, and jar files

The ec (ecj/src/main/java/ec/) directory is ECJ’s top-level package. Every subdirectory is a subpackage,

and most of them are headed by helpful README files which describe the contents of the directory. Most

packages contain not only Java files and class files but also parameter files and occasional data files: ECJ

was designed originally for the class files to be compiled and stored right alongside the Java files in these

directories, though it can be used with the separate-build-area approach taken by IDEs like Eclipse.

Because ec is the top-level package, you can compile ECJ, more or less, by just sticking its parent directory

(the ecj directory), in your CLASSPATH. You will also need to add certain

jar files

in order to compile ECJ’s

distributed evaluation and island model facilities, and its GUI. You can get these jar files from the ECJ

main website (http://cs.gmu.edu/

∼

eclab/projects/ecj/). Note that none of these libraries is required. For

example, if the libraries for the distributed evaluator and island model are missing, ECJ will compile but will

complain if you try to run those packages with compression turned on (a feature of the packages). The GUI

library is optional to ECJ, so if you don’t install its libraries, you can still compile ECJ by just deleting the

ec/display directory.

1.3.1.1 The ec/display Directory: ECJ’s GUI

This directory contains ECJ’s GUI. It’s in a state of disrepair and I suggest you do not use it. ECJ is really best

as a command line program. In fact, as mentioned above, you can simply delete the directory and ECJ will

compile just fine.

1.3.1.2 The ec/app Directory: Demo Applications

This directory contains all the demo applications. We have quite a number of demo applications, many

sharing the same subdirectories. Read the provided README file for some guidance.

1.3.2 The docs Directory

This directory contains all top-level documentation of ECJ except for the various README files scattered

throughout the package. The index.html file provides the top-level entry point to the documentation.

The documentation includes:

• Introduction to parameters in ECJ

• Class documentation

•

ECJ’s four tutorials and post-tutorial discussion. The actual tutorial code is located in the ec/app

directory.

• An (old) overview of ECJ

• An (old) discussion of ECJ’s warts

• Some (old) graph diagrams of ECJ’s structure

• This manual

15

1.3.2.1 Tutorials

ECJ has four tutorials which introduce you to the basics of coding on the system.

I strongly suggest you go

through them before continuing through the rest of this manual. They are roughly:

1. A simple GA to solve the MaxOnes problem with a boolean representation.

2. A GA to solve an integer problem, with a custom mutation pipeline.

3.

An evolution strategy to solve a floating-point problem, with a custom statistics object and reading

and writing populations.

4. A genetic programming problem, plus some elitism.

As should be obvious from the rest of this manual, this

barely scratches the surface

of ECJ. No mention

is given of parallelism, differential evolution, coevolution, multiobjective optimization, list and ruleset

representations, grammatical encoding, spatial embedding, etc. But it’ll get you up to speed.

16

Chapter 2

ec.Evolve and Utility Classes

ECJ is big. Let us begin.

ECJ’s entry point is the class ec.Evolve. This class is little more than bootstrapping code to set up the ECJ

system, construct basic datatypes, and get things going.

To run an ECJ process, you fire up ec.Evolve with certain runtime arguments.

java ec.Evolve -file myParameterFile.params -p param=value -p param=value (etc.)

ECJ sets itself up entirely using a

parameter file

. To this you can add additional

command-line parame-

ters

which override those found in the parameter file. More on the parameter file will be discussed starting

in Section 2.1.

For example, if you were presently in the ecj directory, you could do this:

java ec.Evolve -file ec/app/ecsuite/ecsuite.params

This all assumes that the parameter file is a free-standing file in your filesystem. But it might not be:

you might want to start up from a parameter file stored within a Jar file (for example if your ECJ library is

bundled up into a Jar file like ecj.jar). To do this you can specify the parameter file as a file resource relative

to the .class file of a class (a-la Java’s Class.getResource(...) method):

java ec.Evolve -from myParameterFile.params -at relative.to.Classname -p param=value

(etc.)

... for example:

java ec.Evolve -from ecsuite.params -at ec.app.ecsuite.ECSuite

You can also say:

java ec.Evolve -from myParameterFile.params -p param=value (etc.)

In which case ECJ will assume that the class is ec.Evolve. In this situation, you’d probably need to specify

the parameter file as a path away from ec.Evolve (which is in the ec directory), for example:

java ec.Evolve -from app/ecsuite/ecsuite.params

(Note the missing ec/...). See Section 2.1 for more discussion about all this.

17

ECJ can also restart from a checkpoint file it created in a previous run:

java ec.Evolve -checkpoint myCheckpointFile.gz

Checkpointing will be discussed in Section 2.3.

Last but not least, if you forget this stuff, you can always type this to get some reminders:

java ec.Evolve -help

The purpose of ec.Evolve is to construct an ec.EvolutionState instance, or load one from a checkpoint file;

then get it running; and finally clean up. The ec.EvolutionState class actually performs the evolutionary

process. Most of the stuff ec.EvolutionState holds is associated with evolutionary algorithms or other

stochastic optimization procedures. However there are certain important utility objects or data which are

created by ec.Evolve prior to creating the ec.EvolutionState, and are then stored into ec.EvolutionState after it

has been constructed. These objects are:

•

The

Parameter Database

, which holds all the parameters ec.EvolutionState uses to build and run the

process.

• The Output, which handles logging and writing to files.

• The Checkpointing Facility to create checkpoint files as the process continues.

• The Number of Threads to use, and the Random Number Generators, one per thread.

• A simple declaration of the Number of Jobs to run in the process.

The remainder Section 2 discusses each of these items. It’s not the most exciting of topics: but it’s

important in order to understand the rest of the ECJ process.

2.1 The Parameter Database

To build and run an experiment in ECJ, you typically write three things:

• (In Java) A problem which evaluates individuals and assigns fitness values to them.

•

(In Java) Depending on the kind of experiment, various

components

from which individuals can be

constructed — for example, for a genetic programming experiment, you’ll need to define the kinds of

nodes which can be used to make up the individual’s tree.

•

(In one or more Parameter Files) Various

parameters

which define the kind of algorithm you are using,

the nature of the experiment, and the makeup of your populations and processes.

Let’s begin with the third item. Parameters are the lifeblood of ECJ: practically everything in the system

is defined by them. This makes ECJ highly flexible; but it also adds complexity to the system.

ECJ loads parameter files and stores them into the ec.util.ParameterDatabase object, which is available

to nearly everything. Parameter files are an extension of the files used by Java’s old

java.util.PropertyList

object. Parameter files usually end in

".params"

, and contain parameters one to a line. Parameter files may

also contain blank (all whitespace) lines, which are ignored, and also lines which start with

"#"

, which are

considered comments and also ignored. An example comment:

# This is a comment

The parameter lines in a parameter file typically look like this:

parameter.name =parameter value

18

A

parameter name

is a string of non-whitespace characters except for

"="

. After this comes some optional

whitespace, then an

"="

, then some more optional whitespace.

1

A

parameter value

is a string of characters,

including whitespace, except that all whitespace is trimmed from the front and end of the string. Notice the

use of a period the parameter name. It’s quite a common convention to use periods in various parameter

names in ECJ. We’ll get to why in a second.

Here are some legal parameter lines:

generations = 400

pop.subpop.0.size =1000

pop.subpop= ec.Subpopulation

Here are some illegal parameter lines:

generations

= 1000

pop subpop = ec.Subpopulation

2.1.1 Inheritance

Parameter files may be set up to

derive from

one or more other parameter files. Let’s say you have two

parameter files, a.params and b.params. Both are located in the same directory. You can set up a.params to

derive from b.params by adding the following line as the very first line in the a.params file:

parent.0 = b.params

This says, in effect: “include in me all the parameters found in the b.params file, but any parameters I

myself declare will override any parameters of the same name in the b.params file.” Note that b.params may

itself derive from some other file (say, c.params). In this case, a.params receives parameters from both (and

parameters in b.params will likewise override ones of the same name in c.params).

Let’s say that b.params is located inside a subdirectory called foo. Then the line will look like this:

parent.0 = foo/b.params

Notice the forward slash: ECJ was designed on UNIX systems. Likewise, imagine if b.params was stored

in a sibling directory called bar: then we might say:

parent.0 = ../bar/b.params

You can also define absolute paths, UNIX-style:

parent.0 = /tmp/myproject/foo.params

Long story short: parameter files are declared using traditional UNIX path syntax.

A parameter file can also derive from multiple parent parameter files, by including each at the beginning

of the file, with consecutive numbers, like this:

parent.0 = b.params

parent.1 = yo/d.params

parent.2 = ../z.params

This says in effect: “first look in a.params for the parameter. If you can’t find it there, look in b.params and,

ultimately, all the files b.params derives from. If you can’t find it in any of them, look in d.params and all the

1Actually, you can omit the "=", but it’s considered bad style.

19

files it derives from. If you can’t find it in any of them, look in z.params and all the files it derives from. If

you’ve still not found the parameter, give up.”

This is essentially a depth-first search through a tree or DAG, with parents overriding their children

(the files they derive from) and earlier siblings overriding later siblings. Note that this multiple inheritance

scheme is not the same as C++ or Lisp/CLOS, which use a distance measure!

Parent parameter files can be explicit files on your file system (as shown above) or they can be files located

in JAR files etc. But how do you refer to a file inside a JAR file? It’s easy: refer to it using a

class relative

path

(see the next Section, 2.1.2), which defines the path relative to the class file of some class. For example,

suppose you’re creating a parameter file whose parent is ec/app/ant/ant.params. But you’re not using ECJ in

its unpacked form, but rather bundled up into a JAR file. Thus ec/app/ant/ant.params is archived in that JAR

file. Since this file is right next to ec/app/ant/Ant.class — the class file for the ec.app.ant.Ant class– you can

refer to it as:

parent.0 = @ec.app.ant.Ant ant.params

If your parameter file is already in a JAR file, and it uses ordinary relative path names to refer to its

parents (like ../z.params), these will be interpreted as other files in the archived file system inside that JAR

file. To escape the JAR file you have to use an absolute path name, such as

parent.0 = /tmp/foo.params

It’s pretty rare to need that though, and hardly good style. The whole point of JAR files is to encapsulate

functionality into one package.

Overriding the Parameter File

When you fire up ECJ, you point it at a single parameter file, and you can

provide additional parameters at the command-line, like this:

java ec.Evolve -file parameterFile.params -p command-line-parameter=value \

-p command-line-parameter=value ...

Furthermore, your program itself can submit parameters to the parameter database, though it’s very

unusual to do so. When a parameter is requested from the parameter database, here’s how it’s looked up:

1. If the parameter was declared by the program itself, this value is returned.

2. Else if the parameter was provided on the command line, this value is returned.

3.

Else the parameter is looked up in the provided parameter file and all derived files using the inheritance

ordering described earlier.

4. Else the database signals failure.

2.1.2 Kinds of Parameters

ECJ supports the following kinds of parameters:

•Numbers. Either long integers or double floating-point values. Examples:

generations = 500

tournament.size = 3.25

minimum-fitness = -23.45e15

•Arbitrary Strings trimmed of whitespace. Example:

crossover-type = two-point

20

•Booleans

. Any value except for

"false"

(case-insensitive) is considered to be true. It’s best style to use

lower-case "true" and "false". The first two of these examples are false and the second two are true:

print-params = false

die-a-painful-death = fAlSe

pop.subpop.0.perform-injections = true

quit-on-run-complete = whatever

•Class Names

. Class names are defined as the full class name of the class, including the package.

Example:

pop.subpop.0.species = ec.gp.GPSpecies

•File or Resource Path Names. Paths can be of four types.

– Absolute paths

, which (in UNIX) begin with a

"/"

, stipulate a precise location in the file system.

– Relative paths

, which do not begin with a

"/"

, are defined relative to the parameter file in which

the parameter was located. If the parameter file was an actual file in the filesystem, the relative

path will also be considered to point to a file. If the parameter file was in a jar file, then the relative

path will be considered to point to a resource inside the same jar file relative to the parameter file

location. You’ve seen relative paths already used for derived parameter files.

– Execution relative paths

are defined relative to the directory in which the ECJ process was

launched. Execution relative paths look exactly like relative paths except that they begin with the

special character "$".

– Class relative paths

define a path relative to the class file of a class. They have two parts: the

class in question, and then the path to the resource relative to it. If the class is stored in a Jar file,

then the path to the resource will also be within that Jar file. Otherwise the path will point to an

actual file. Class relative paths begin with

"@"

, followed by the full class name, then spaces or

tabs, then the relative path.

Examples of all four kinds of paths:

stat.file = $out.stat

eval.prob.map-file = ../dungeon.map

temporary-output-file = /tmp/output.txt

image = @ec.app.myapp.MyClass images/picture.png

If the parameter is for a file meant to be opened

read-only

, any of the four approaches above will

work fine. But if the parameter is for a

writable file

, then you have an issue. As discussed in Section

2.1.4, the parameter in question could refer to a file in your operating system, or it could refer to a file

bundled inside a Java jar file. In this second case, the file cannot be written to.

The only kinds of paths which can refer to things inside jar files are relative paths and class-relative

paths. Thus if you stick with absolute paths or execution-relative paths, you know you’ll be referring

to a writable file. For this reason we recommend:

– Read-only Files

should use class-relative paths or relative paths, and should only be accessed

using getResource(...) (see Section 2.1.5).

– Read-Write Files

should use absolute paths or (in most cases) execution-relative paths, and

should only be accessed using getFile(...) (see Section 2.1.5).

•Arrays. ECJ supports loading arrays of doubles,2but does not have direct support for loading arrays

of other types. However it has a convention you should be made aware of. It’s common for arrays to

2See the various “getDoubles” methods in Section 2.1.5.

21

be loaded by first stipulating the number of elements in the array, then stipulating each array element

in turn, starting with 0. The parameter used for the number of elements differs from case to case. Note

the use of periods prior to each number in the following example:

gp.fs.0.size = 6

gp.fs.0.func.0 = ec.app.ant.func.Left

gp.fs.0.func.1 = ec.app.ant.func.Right

gp.fs.0.func.2 = ec.app.ant.func.Move

gp.fs.0.func.3 = ec.app.ant.func.IfFoodAhead

gp.fs.0.func.4 = ec.app.ant.func.Progn2

gp.fs.0.func.5 = ec.app.ant.func.Progn3

The particulars vary. Here’s another, slightly different, example:

exch.num-islands = 8

exch.island.0.id = SurvivorIsland

exch.island.1.id = GilligansIsland

exch.island.2.id = FantasyIsland

exch.island.3.id = TemptationIsland

exch.island.4.id = RhodeIsland

exch.island.5.id = EllisIsland

exch.island.6.id = ConeyIsland

exch.island.7.id = TreasureIsland

Anyway, you get the idea.

2.1.3 Namespace Hierarchies and Parameter Bases

ECJ has lots of parameters, and by convention organizes them in a namespace hierarchy to maintain some

sense of order. The delimiter for paths in this hierarchy is — you guessed it — the period.

The vast majority of parameters are used by one Java object or another to set itself up immediately after it

has been instantiated for the first time. ECJ has an important convention which uses the namespace hierarchy

to do just this: the

parameter base

. A parameter base is essentially a path (or namespace, what have you) in

which an object expects to find all of its parameters. The prefix for this path is typically the parameter name

by which the object itself was loaded.

For example, let us consider the process of defining the class to be used for the global population. This

class is found in the following parameter:

pop = ec.Population

ECJ looks for this parameter, expects a class (in this case, ec.Population), loads the class, and creates one

instance. It then calls a special method (setup(...), we’ll discuss it later) on this class so it can set itself up

from various parameters. In this case, ec.Population needs to know how many subpopulations it will have.

This is defined by the following parameter:

pop.subpops = 2

ec.Population didn’t know that it was supposed to look in

pop.subpops

for this value. Instead, it only

knew that it needed to look in a parameter called

subpops

. The rest (in this case,

pop

) was provided

to ec.Population as its parameter base: the text to be prepended — plus a period — to all parameters that

ec.Population needed to set itself up. It’s not a coincidence that the parameter base also happened to be the

very parameter which defined ec.Population in the first place. This is by convention.

Armed with the fact that it needs to create an array of two subpopulations, ec.Population is ready to load

the classes for those two subpopulations. Let’s say that for our experiment we want them to be of different

classes. Here they are:

22

pop.subpop.0 = ec.Subpopulation

pop.subpop.1 = ec.app.myapp.MySpecialSubpopulation

The two classes are loaded and one instance is created of each of them. Then setup(...) is called on each of

them. Each subpopulation looks for a parameter called

size

to tell it how may individuals will be in that

subpopulation. Since each of them is provided with a different parameter base, they can have different sizes:

pop.subpop.0.size = 100

pop.subpop.1.size = 512

Likewise, each of these subpopulations needs a “species”. Presuming that the species are different classes,

we might have:

pop.subpop.0.species = ec.vector.VectorSpecies

pop.subpop.1.species = ec.gp.GPSpecies

These species objects themselves need to be set up, and when they do, their parameter bases will be

pop.subpop.0.species and pop.subpop.1.species respectively. And so on.

Now imagine that we have ten subpopulations, all of the same class (ec.Subpopulation), and all but the first

one has the exact same size. We’d wind up having to write silly stuff like this:

pop.subpop.0.size = 1000

pop.subpop.1.size = 500

pop.subpop.2.size = 500

pop.subpop.3.size = 500

pop.subpop.4.size = 500

pop.subpop.5.size = 500

pop.subpop.6.size = 500

pop.subpop.7.size = 500

pop.subpop.8.size = 500

pop.subpop.9.size = 500

That’s a lot of typing. Though I am saddened to report that ECJ’s parameter files do require a lot of typing,

at least the parameter database facility offers an option to save our fingers somewhat in this case. Specifically,

when the ec.Subpopulation class sets itself up each time, it actually looks in not one but two path locations for

the

size

parameter: first it tacks on its current base (as above), and if there’s no parameter at that location,

then it tries tacking on a

default base

defined for its class. In this case, the default base for ec.Subpopulation

is the prefix ec.subpop. Armed with this we could simply write:

ec.subpop.size = 500

pop.subpop.0.size = 1000

When ECJ looks for subpopulation 0’s size, it’ll find it as normal (1000). But when it looks for subpopula-

tion 1 (etc.), it won’t find a size parameter in the normal location, so it’ll look in the default location, and use

what it finds there (500). Only if there’s no parameter to be found in either location will ECJ signal an error.

It’s important to note that if a class is loaded from a default parameter, this doesn’t mean that the default

parameter will become its parameter base: rather, the original expected location will continue to be the base.

For example, imagine if both of our Species objects were the same class, and we had defined them using the

default base. That is, instead of

pop.subpop.0.species = ec.vector.VectorSpecies

pop.subpop.1.species = ec.vector.VectorSpecies

...we simply said

23

ec.subpop.species = ec.vector.VectorSpecies

When the species for subpopulation 0 is loaded, its parameter base is not going to be

ec.subpop.species

.

Instead, it will still be

pop.subpop.0.species

. Likewise, the parameter base for the species of subpopulation

1 will still be pop.subpop.1.species.

Keep in mind that all of this is just a convention. You can use periods for whatever you like ultimately.

And there exist a few global parameters without any base at all. For example, the number of generations is

defined as

generations = 200

...and the seed for the random number generator the fourth thread is

seed.3 = 12303421

...even though there is no object set up with the

seed

parameter, and hence no object has

seed

as its parameter

base. Random number generators are one of the few rare objects in ECJ which are not specified from the

parameter file.

2.1.4 Parameter Files in Jar Files

Parameter files don’t have to be just in your file system: they can be bundled up in jar files. If a parameter

file is being read from a jar file, its parents will be generally assumed to be from the same jar file as well if

they’re relative paths (they don’t start with "’/’" in UNIX).

So how do you point to a parameter file in a jar file to get things rolling? You can run ECJ like this:

java ec.Evolve -from parameterFile.params -at relative.class.Name ...

This instructs ECJ to look for the .class file of the class relative.class.Name, be it in the file system or in a Jar

file. Once ECJ has found it, it looks for the path parameterFile.params relative to this file. You can omit the

classname, which causes ECJ to assume that the class in question is ec.Evolve. For example, to run the Ant

demo from ECJ (in a Jar file or unpacked into the file system), you could say:

java ec.Evolve -from app/ant/ant.params

Notice it does not say ec/app/ant/ant.params, which is probably what you’d expect if you used

"-file"

rather than

"-from"

. This is because ECJ goes to the ec/Evolve.class file, then from there it searches for the

parameter file. The path of the parameter file relative to the ec/Evolve.class file is app/ant/ant.params.

There are similar rules regarding file references (such as parent references) within a parameter file. Let’s

say that your parameter file is inside a jar file. If you say something like:

parent.0 = ../path/to/the/parent.params

... then ECJ will look around inside the same Jar file for this file, rather than externally in the operating

system’s file system or in some other Jar file.

You can escape this however. For example, once your parameter file is inside a Jar file, you can still define

a parent in another Jar file, or in the file system, if you know a another class file it’s located relative to. You

just need to specify another class for ECJ to start at, and a path relative to it, like this:

parent.0 = @ec.foo.AnotherClass relative/path/to/the/parent.params

See the next section for more explanation of that text format.

Last but not least, once your parameter file is in a Jar file, you can refer to a parent in the file system if

you use an absolute path (that is, one which (in UNIX anyway) starts with "’/’"). For example:

24

parent.0 = /Users/sean/myexperiment/other.params

Absolute path names aren’t very portable and aren’t recommended.

2.1.5 Accessing Parameters

Parameters are looked up in the ec.util.ParameterDatabase class, and parameter names are specified using

the ec.Parameter class. The latter is little more than a cover for Java strings. To create the parameter

pop.subpop.0.size, we say:

Parameter param = new Parameter("pop.subpop.0.size");

Of course, usually we don’t want to just make a direct parameter, but rather want to construct one from a

parameter base and the remainder. Let’s say our base (

pop.subpop.0

) is stored in the variable base, and we

want to look for size. We do this as:

Parameter param = base.push("size");

Here are some common ec.util.ParameterDatabase methods. Note that all of them look in two places to

find a parameter value. This is what we use to handle “standard” and “default” bases. Typically you’d pass

in the parameter in its standard location, and also (in the “default parameter”) parameter with its default

base configuration. You can pass in null for either, and it’ll get ignored.

ec.util.ParameterDatabase Methods

public boolean exists(Parameter parameter, Parameter default)

If either parameter exists in the database, return true. Either parameter may be null.

public String getString(Parameter parameter, Parameter default)

Look first in parameter, then failing that, in default parameter, and return the result as a String, else null if not found.

Either parameter may be null.

public File getFile(Parameter parameter, Parameter default)

Look first in parameter, then failing that, in default parameter, and return the result as a File, else null if not found.

Either parameter may be null.

Important Note.

You should generally only use this method if you are writing to a

file. Otherwise it’s best if you used getResource(...).

public InputStream getResource(Parameter parameter, Parameter default)

Look first in parameter, then failing that, in default parameter, and open an InputStream to the result, else null if not

found. Either parameter may be null.

Important Note.

This is distinguished from getFile(...) in that the object

doesn’t have to be a file in the file system: it can for example be a location in a jar file. If the parameter specifies an

absolute path or an execution relative path, then a file in the file system will be opened. If the parameter specifies

a relative path, and the parameter database was itself loaded as a file rather than a resource (in a jar file say), then

a file will be opened, else a resource will be opened in the same jar file as the parameter file. You can also specify a

resource path directly.

public Object getInstanceForParameterEq(Parameter parameter, Parameter default, Class superclass)

Look first in parameter, then failing that, in default parameter, to find a class. The class must have superclass as a

superclass, or can be the superclass itself. Instantiate one instance of the class using the default (no-argument)

constructor, and return the instance. Throws an ec.util.ParamClassLoadException if no class is found.

public Object getInstanceForParameter(Parameter parameter, Parameter default, Class superclass)

Look first in parameter, then failing that, in default parameter, to find a class. The class must have superclass as a

superclass, but may not be superclass itself. Instantiate one instance of the class using the default (no-argument)

constructor, and return the instance. Throws an ec.util.ParamClassLoadException if no class is found.

25

public int getBoolean(Parameter parameter, Parameter default, double defaultValue)

Look first in parameter, then failing that, in default parameter, and return the result as a boolean, else defaultValue if

not found or not a boolean. Either parameter may be null.

public int getIntWithDefault(Parameter parameter, Parameter default, int defaultValue)

Look first in parameter, then failing that, in default parameter, and return the result as an int, else defaultValue if not

found or not an int. Either parameter may be null.

public int getInt(Parameter parameter, Parameter default, int minValue)

Look first in parameter, then failing that, in default parameter, and return the result as an int, else minValue

−

1 if not

found, not an int, or <minValue. Either parameter may be null.

public int getIntWithMax(Parameter parameter, Parameter default, int minValue, int maxValue)

Look first in parameter, then failing that, in default parameter, and return the result as an int, else minValue

−

1 if not

found, not an int, <minValue, or >maxValue. Either parameter may be null.

public long getLongWithDefault(Parameter parameter, Parameter default, long defaultValue)

Look first in parameter, then failing that, in default parameter, and return the result as a long, else defaultValue if not