Manual

User Manual:

Open the PDF directly: View PDF ![]() .

.

Page Count: 82

- 1 Presentation

- 2 About N2D2-IP

- 3 Performing simulations

- 4 INI file interface

- 4.1 Syntax

- 4.2 Template inclusion syntax

- 4.3 Global parameters

- 4.4 Databases

- 4.5 Stimuli data analysis

- 4.6 Environment

- 4.6.1 Built-in transformations

- AffineTransformation

- ApodizationTransformation

- ChannelExtractionTransformation

- ColorSpaceTransformation

- DFTTransformation

- DistortionTransformation

- EqualizeTransformation

- ExpandLabelTransformation

- FilterTransformation

- FlipTransformation

- GradientFilterTransformation

- LabelSliceExtractionTransformation

- MagnitudePhaseTransformation

- MorphologicalReconstructionTransformation

- MorphologyTransformation

- NormalizeTransformation

- PadCropTransformation

- RandomAffineTransformation

- RangeAffineTransformation

- RangeClippingTransformation

- RescaleTransformation

- ReshapeTransformation

- SliceExtractionTransformation

- ThresholdTransformation

- TrimTransformation

- WallisFilterTransformation

- 4.6.1 Built-in transformations

- 4.7 Network layers

- 4.7.1 Layer definition

- 4.7.2 Weight fillers

- 4.7.3 Weight solvers

- 4.7.4 Activation functions

- 4.7.5 Anchor

- 4.7.6 Conv

- 4.7.7 Deconv

- 4.7.8 Pool

- 4.7.9 Unpool

- 4.7.10 ElemWise

- 4.7.11 FMP

- 4.7.12 Fc

- 4.7.13 Rbf

- 4.7.14 Softmax

- 4.7.15 LRN

- 4.7.16 LSTM

- 4.7.17 Dropout

- 4.7.18 Padding

- 4.7.19 Resize

- 4.7.20 BatchNorm

- 4.7.21 Transformation

- 5 Tutorials

Commissariat à l’Energie Atomique et aux Energies Alternatives Département Architecture Conception et Logiciels Embarqués

Institut List | CEA Saclay Nano-INNOV | Bât. 861-PC142

91191 Gif-sur-Yvette Cedex - FRANCE

Tel. : +33 (0)1.69.08.49.67 | Fax : +33(0)1.69.08.83.95

www-list.cea.fr

Établissement Public à caractère Industriel et Commercial | RCS Paris B 775 685 019

Neural Network Design & Deployment

Olivier Bichler, David Briand, Victor Gacoin,

Benjamin Bertelone, Thibault Allenet, Johannes C. Thiele

Wednesday 20th February, 2019

Contents

1 Presentation 6

1.1 Databasehandling .................................... 6

1.2 Datapre-processing ................................... 6

1.3 Deepnetworkbuilding.................................. 7

1.4 Performancesevaluation................................. 8

1.5 Hardwareexports..................................... 8

1.6 Summary ......................................... 10

2 About N2D2-IP 11

3 Performing simulations 11

3.1 Obtaining the latest version of this manual . . . . . . . . . . . . . . . . . . . . . . 11

3.2 Minimum system requirements . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 11

3.3 ObtainingN2D2 ..................................... 12

3.3.1 Prerequisites ................................... 12

Red Hat Enterprise Linux (RHEL) 6 . . . . . . . . . . . . . . . . . . . . . . 12

Ubuntu ...................................... 12

Windows ..................................... 13

3.3.2 Gettingthesources................................ 13

3.3.3 Compilation.................................... 13

3.4 Downloading training datasets . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 14

3.5 Runthelearning ..................................... 14

3.6 Testalearnednetwork.................................. 14

3.6.1 Interpreting the results . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 14

Recognitionrate ................................. 14

Confusionmatrix................................. 14

Memory and computation requirements . . . . . . . . . . . . . . . . . . . . 14

Kernels and weights distribution . . . . . . . . . . . . . . . . . . . . . . . . 14

Outputmapsactivity .............................. 15

3.7 Exportalearnednetwork ................................ 15

3.7.1 CexportN2D2 IP only ...................................... 17

3.7.2 CPP_OpenCL exportN2D2 IP only ................................ 18

3.7.3 CPP_TensorRT export .............................. 19

3.7.4 CPP_cuDNN export ................................ 20

3.7.5 C_HLS exportN2D2 IP only ................................... 20

4 INI file interface 21

4.1 Syntax........................................... 21

4.1.1 Properties..................................... 21

4.1.2 Sections...................................... 21

4.1.3 Casesensitivity.................................. 21

4.1.4 Comments..................................... 21

4.1.5 Quotedvalues................................... 21

4.1.6 Whitespace .................................... 21

4.1.7 Escapecharacters ................................ 21

4.2 Template inclusion syntax . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 22

4.2.1 Variable substitution . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 22

4.2.2 Controlstatements................................ 22

block........................................ 23

for......................................... 23

2/82

if.......................................... 23

include ...................................... 23

4.3 Globalparameters .................................... 23

4.4 Databases......................................... 23

4.4.1 MNIST ...................................... 23

4.4.2 GTSRB ...................................... 23

4.4.3 Directory ..................................... 24

4.4.4 Other built-in databases . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 26

CIFAR10_Database ................................ 26

CIFAR100_Database ............................... 26

CKP_Database .................................. 26

Caltech101_DIR_Database ........................... 26

Caltech256_DIR_Database ........................... 26

CaltechPedestrian_Database ......................... 27

Cityscapes_Database .............................. 27

Daimler_Database ................................ 27

DOTA_Database .................................. 28

FDDB_Database .................................. 28

GTSDB_DIR_Database .............................. 28

ILSVRC2012_Database .............................. 28

KITTI_Database ................................. 28

KITTI_Road_Database .............................. 29

KITTI_Object_Database ............................ 29

LITISRouen_Database .............................. 29

4.4.5 Dataset images slicing . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 29

4.5 Stimulidataanalysis................................... 30

4.5.1 Zero-mean and unity standard deviation normalization . . . . . . . . . . . . 30

4.5.2 Substracting the mean image of the set . . . . . . . . . . . . . . . . . . . . 30

4.6 Environment ....................................... 32

4.6.1 Built-in transformations . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 33

AffineTransformation ............................. 34

ApodizationTransformation .......................... 34

ChannelExtractionTransformation ...................... 35

ColorSpaceTransformation .......................... 35

DFTTransformation ............................... 36

DistortionTransformationN2D2 IP only .......................... 36

EqualizeTransformationN2D2 IP only ............................ 36

ExpandLabelTransformationN2D2 IP only .......................... 37

FilterTransformation ............................. 37

FlipTransformation .............................. 38

GradientFilterTransformationN2D2 IP only ........................ 38

LabelSliceExtractionTransformationN2D2 IP only .................... 38

MagnitudePhaseTransformation ........................ 39

MorphologicalReconstructionTransformationN2D2 IP only ............... 40

MorphologyTransformationN2D2 IP only .......................... 40

NormalizeTransformation ........................... 41

PadCropTransformation ............................ 41

RandomAffineTransformationN2D2 IP only ......................... 41

RangeAffineTransformation .......................... 43

RangeClippingTransformationN2D2 IP only ........................ 43

RescaleTransformation ............................ 43

ReshapeTransformation ............................ 43

3/82

SliceExtractionTransformationN2D2 IP only ....................... 43

ThresholdTransformation ........................... 44

TrimTransformation .............................. 44

WallisFilterTransformationN2D2 IP only ......................... 44

4.7 Networklayers ...................................... 44

4.7.1 Layerdefinition.................................. 44

4.7.2 Weightfillers ................................... 45

ConstantFiller ................................. 46

HeFiller ..................................... 46

NormalFiller .................................. 46

UniformFiller .................................. 46

XavierFiller .................................. 46

4.7.3 Weightsolvers .................................. 47

SGDSolver_Frame ................................ 47

SGDSolver_Frame_CUDA ............................. 47

AdamSolver_Frame ................................ 48

AdamSolver_Frame_CUDA ............................ 48

4.7.4 Activationfunctions ............................... 48

Logistic ..................................... 48

LogisticWithLoss ................................ 48

Rectifier .................................... 49

Saturation .................................... 49

Softplus ..................................... 49

Tanh ........................................ 49

TanhLeCun .................................... 49

4.7.5 Anchor ...................................... 49

Configuration parameters (Frame models)................... 49

Outputsremapping ............................... 50

4.7.6 Conv ........................................ 51

Configuration parameters (Frame models)................... 53

Configuration parameters (Spike models) ................... 53

4.7.7 Deconv ...................................... 54

Configuration parameters (Frame models)................... 56

4.7.8 Pool ........................................ 56

Maxoutexample ................................. 56

Configuration parameters (Spike models) ................... 58

4.7.9 Unpool ...................................... 58

4.7.10 ElemWise ..................................... 60

Sum operation................................... 60

AbsSum operation................................. 60

EuclideanSum operation............................. 60

Prod operation .................................. 60

Max operation................................... 60

Examples ..................................... 60

4.7.11 FMP ........................................ 61

Configuration parameters (Frame models)................... 61

4.7.12 Fc ......................................... 61

Configuration parameters (Frame models)................... 61

Configuration parameters (Spike models) ................... 62

4.7.13 RbfN2D2 IP only ........................................ 63

Configuration parameters (Frame models)................... 63

4.7.14 Softmax ...................................... 63

4/82

4.7.15 LRN ........................................ 64

Configuration parameters (Frame models)................... 64

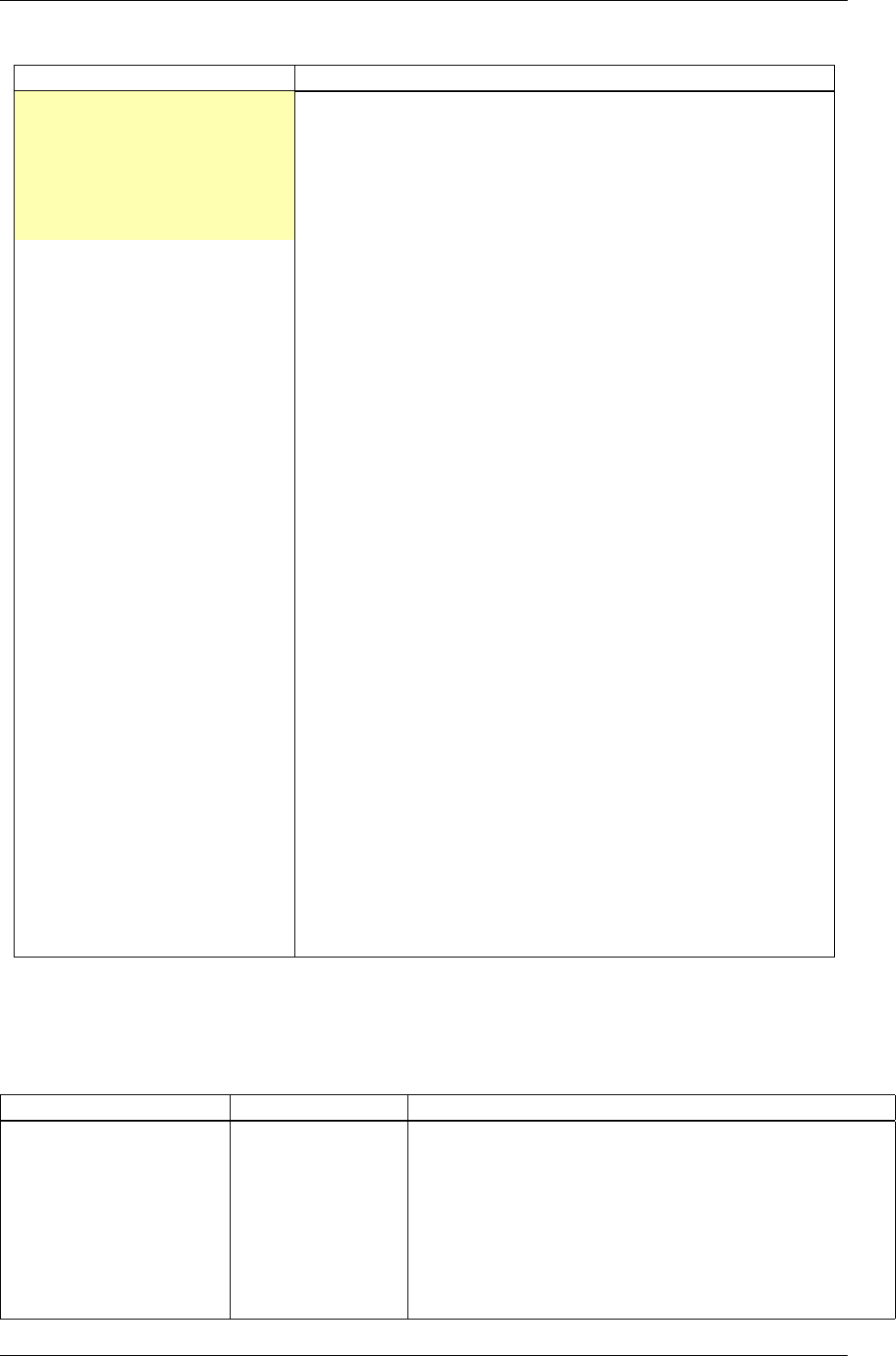

4.7.16 LSTM ....................................... 64

Global layer parameters (Frame_CUDA models) ............... 64

Configuration parameters (Frame_CUDA models) .............. 65

Currentrestrictions ............................... 66

Further development requirements . . . . . . . . . . . . . . . . . . . . . . . 66

Developmentguidance.............................. 66

4.7.17 Dropout ...................................... 67

Configuration parameters (Frame models)................... 67

4.7.18 Padding ...................................... 67

4.7.19 Resize ...................................... 67

Configuration parameters . . . . . . . . . . . . . . . . . . . . . . . . . . . . 68

4.7.20 BatchNorm .................................... 68

Configuration parameters (Frame models)................... 68

4.7.21 Transformation ................................. 68

5 Tutorials 70

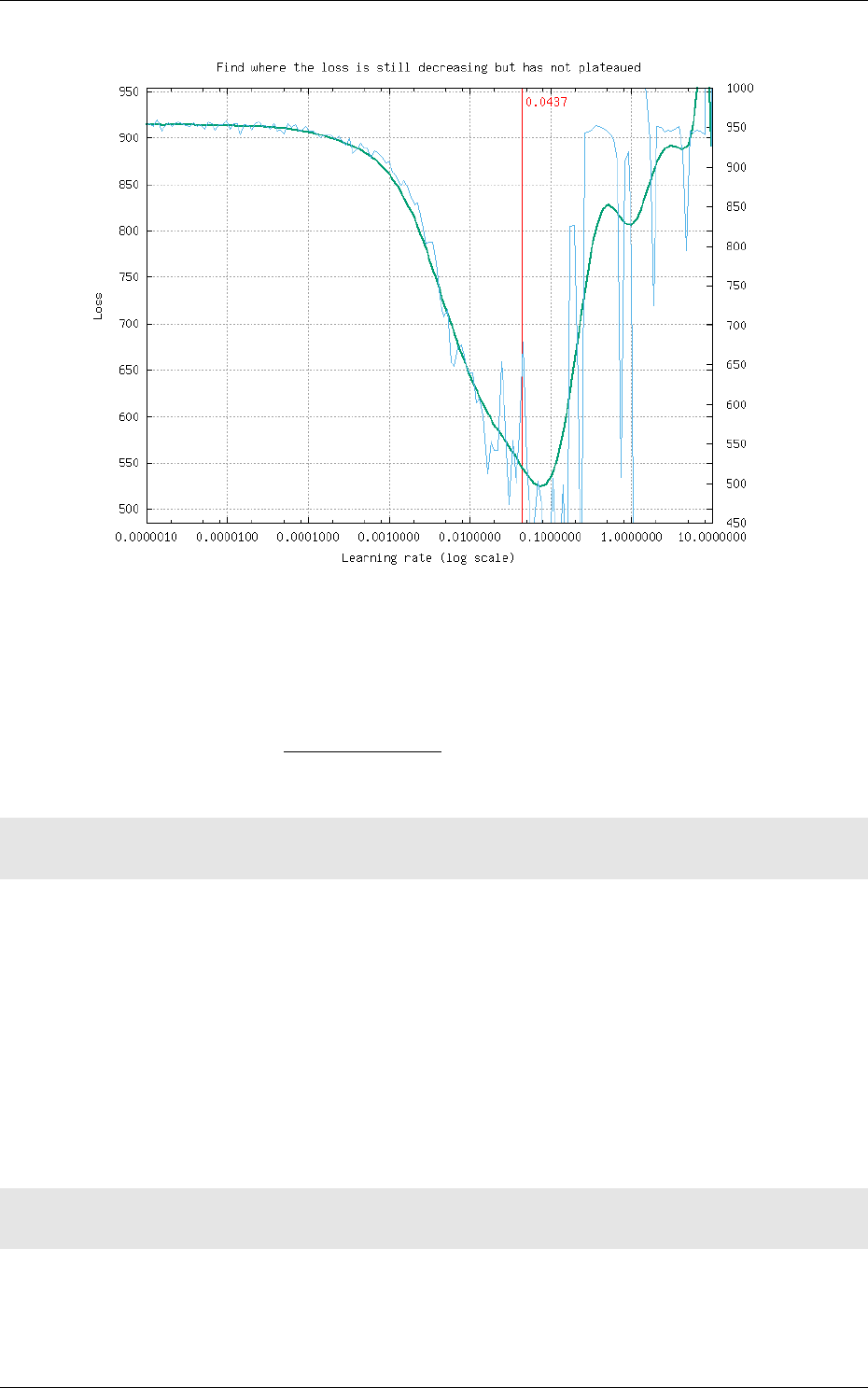

5.1 Learning deep neural networks: tips and tricks . . . . . . . . . . . . . . . . . . . . 70

5.1.1 Choose the learning solver . . . . . . . . . . . . . . . . . . . . . . . . . . . . 70

5.1.2 Choose the learning hyper-parameters . . . . . . . . . . . . . . . . . . . . . 70

5.1.3 Convergence and normalization . . . . . . . . . . . . . . . . . . . . . . . . . 71

5.2 Building a classifier neural network . . . . . . . . . . . . . . . . . . . . . . . . . . . 71

5.3 Building a segmentation neural network . . . . . . . . . . . . . . . . . . . . . . . . 74

5.3.1 Facesdetection.................................. 76

5.3.2 Genderrecognition................................ 76

5.3.3 ROIsextraction.................................. 77

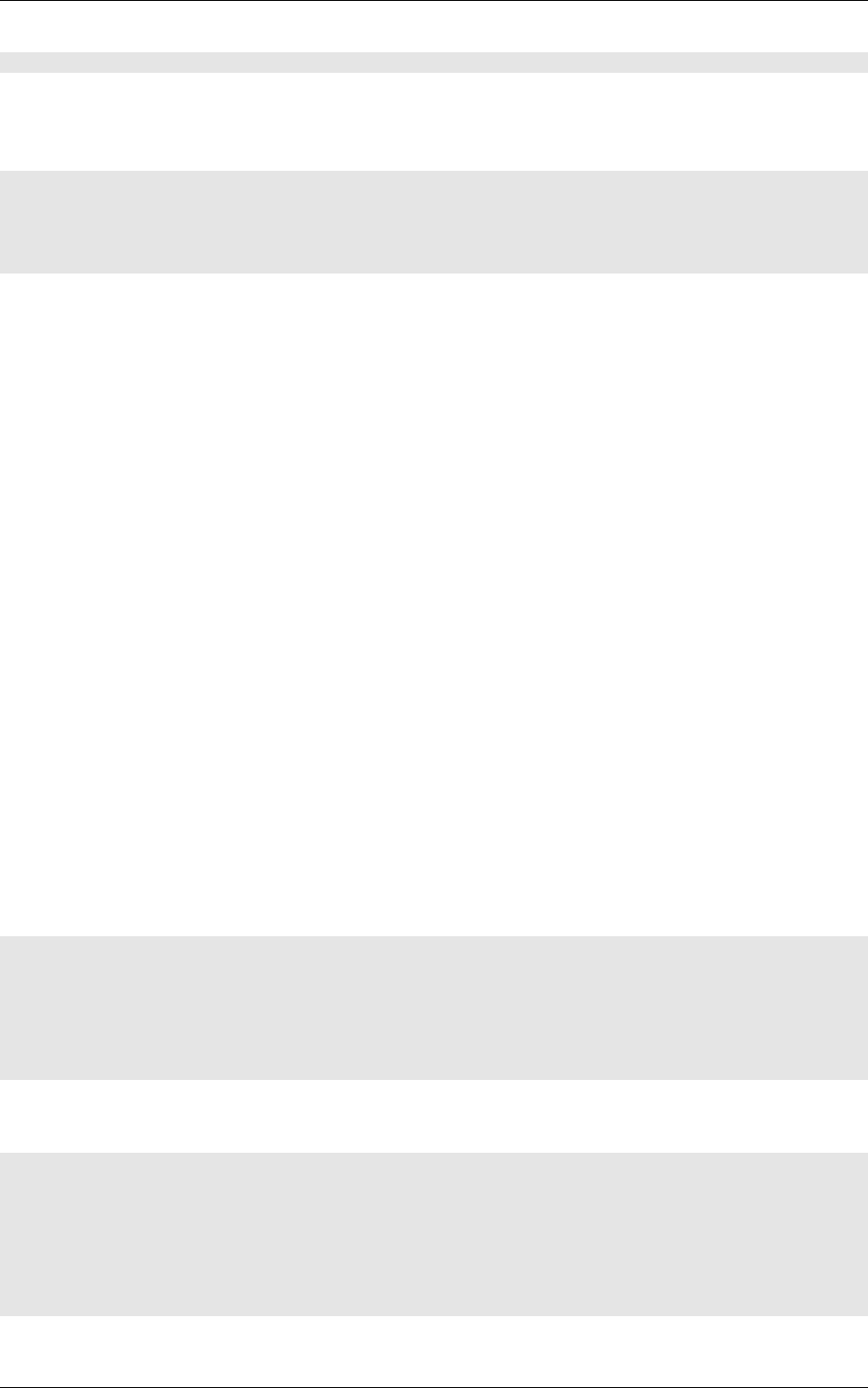

5.3.4 Datavisualization ................................ 78

5.4 Transcoding a learned network in spike-coding . . . . . . . . . . . . . . . . . . . . 78

5.4.1 Render the network compatible with spike simulations . . . . . . . . . . . . 78

5.4.2 Configure spike-coding parameters . . . . . . . . . . . . . . . . . . . . . . . 79

5/82

1 Presentation

The N2D2 platform is a comprehensive solution for fast and accurate Deep Neural Network (DNN)

simulation and full and automated DNN-based applications building. The platform integrates

database construction, data pre-processing, network building, benchmarking and hardware export

to various targets. It is particularly useful for DNN design and exploration, allowing simple and fast

prototyping of DNN with different topologies. It is possible to define and learn multiple network

topology variations and compare the performances (in terms of recognition rate and computationnal

cost) automatically. Export targets include CPU, DSP and GPU with OpenMP, OpenCL, Cuda,

cuDNN and TensorRT programming models as well as custom hardware IP code generation with

High-Level Synthesis for FPGA and dedicated configurable DNN accelerator IP1.

In the following, the first section describes the database handling capabilities of the tool,

which can automatically generate learning, validation and testing data sets from any hand made

database (for example from simple files directories). The second section briefly describes the data

pre-processing capabilites built-in the tool, which does not require any external pre-processing

step and can handle many data transformation, normalization and augmentation (for example

using elastic distortion to improve the learning). The third section show an example of DNN

building using a simple INI text configuration file. The fourth section show some examples of

metrics obtained after the learning and testing to evaluate the performances of the learned DNN.

Next, the fifth section introduces the DNN hardware export capabilities of the toolflow, which can

automatically generate ready to use code for various targets such as embedded GPUs or full custom

dedicated FPGA IP. Finally, we conclude by summarising the main features of the tool.

1.1 Database handling

The tool integrates everything needed to handle custom or hand made databases:

•Genericity: load image and sound, 1D, 2D or 3D data;

•

Associate a label for each data point (useful for scene labeling for example) or a single label

to each data file (one object/class per image for example), 1D or 2D labels;

•Advanced Region of Interest (ROI) handling:

Support arbitrary ROI shapes (circular, rectangular, polygonal or pixelwise defined);

Convert ROIs to data point (pixelwise) labels;

Extract one or multiple ROIs from an initial dataset to create as many corresponding

additional data to feed the DNN;

•

Native support of file directory-based databases, where each sub-directory represents a

different label. Most used image file formats are supported (JPEG, PNG, PGM...);

•Possibility to add custom datafile format in the tool without any change in the code base;

•Automatic random partitionning of the database into learning, validation and testing sets.

1.2 Data pre-processing

Data pre-processing, such as image rescaling, normalization, filtering... is directly integrated into

the toolflow, with no need for external tool or pre-processing. Each pre-processing step is called a

transformation.

The full sequence of transformations can be specified easily in a INI text configuration file. For

example:

; First step: convert the image to grayscale

[env.Transformation-1]

Type=ChannelExtractionTransformation

CSChannel=Gray

1Ongoing work

6/82

; Second step: rescale the image to a 29x29 size

[env.Transformation-2]

Type=RescaleTransformation

Width=29

Height=29

; Third step: apply histogram equalization to the image

[env.Transformation-3]

Type=EqualizeTransformation

; Fourth step (only during learning): apply random elastic distortions to the images to extent the

learning set

[env.OnTheFlyTransformation]

Type=DistortionTransformation

ApplyTo=LearnOnly

ElasticGaussianSize=21

ElasticSigma=6.0

ElasticScaling=20.0

Scaling=15.0

Rotation=15.0

Example of pre-processing transformations built-in in the tool are:

•Image color space change and color channel extraction;

•Elastic distortion;

•Histogram equalization (including CLAHE);

•

Convolutional filtering of the image with custom or pre-defined kernels (Gaussian, Gabor...);

•(Random) image flipping;

•(Random) extraction of fixed-size slices in a given label (for multi-label images)

•Normalization;

•Rescaling, padding/cropping, triming;

•Image data range clipping;

•(Random) extraction of fixed-size slices.

1.3 Deep network building

The building of a deep network is straightforward and can be done withing the same INI configuration

file. Several layer types are available: convolutional, pooling, fully connected, Radial-basis function

(RBF) and softmax. The tool is highly modular and new layer types can be added without

any change in the code base. Parameters of each layer type are modifiable, for example for the

convolutional layer, one can specify the size of the convolution kernels, the stride, the number of

kernels per input map and the learning parameters (learning rate, initial weights value...). For the

learning, the data dynamic can be chosen between 16 bits (with NVIDIA

®

cuDNN

2

), 32 bit and 64

bit floating point numbers.

The following example, which will serve as the use case for the rest of this presentation, shows

how to build a DNN with 5 layers: one convolution layer, followed by one MAX pooling layer,

followed by two fully connected layers and a softmax output layer.

; Specify the input data format

[env]

SizeX=24

SizeY=24

BatchSize=12

; First layer: convolutional with 3x3 kernels

[conv1]

Input=env

Type=Conv

2On future GPUs

7/82

KernelWidth=3

KernelHeight=3

NbOutputs=32

Stride=1

; Second layer: MAX pooling with pooling area 2x2

[pool1]

Input=conv1

Type=Pool

Pooling=Max

PoolWidth=2

PoolHeight=2

NbOutputs=32

Stride=2

Mapping.Size=1 ; one to one connection between convolution output maps and pooling input maps

; Third layer: fully connected layer with 60 neurons

[fc1]

Input=pool1

Type=Fc

NbOutputs=60

; Fourth layer: fully connected with 10 neurons

[fc2]

Input=fc1

Type=Fc

NbOutputs=10

; Final layer: softmax

[softmax]

Input=fc2

Type=Softmax

NbOutputs=10

WithLoss=1

[softmax.Target]

TargetValue=1.0

DefaultValue=0.0

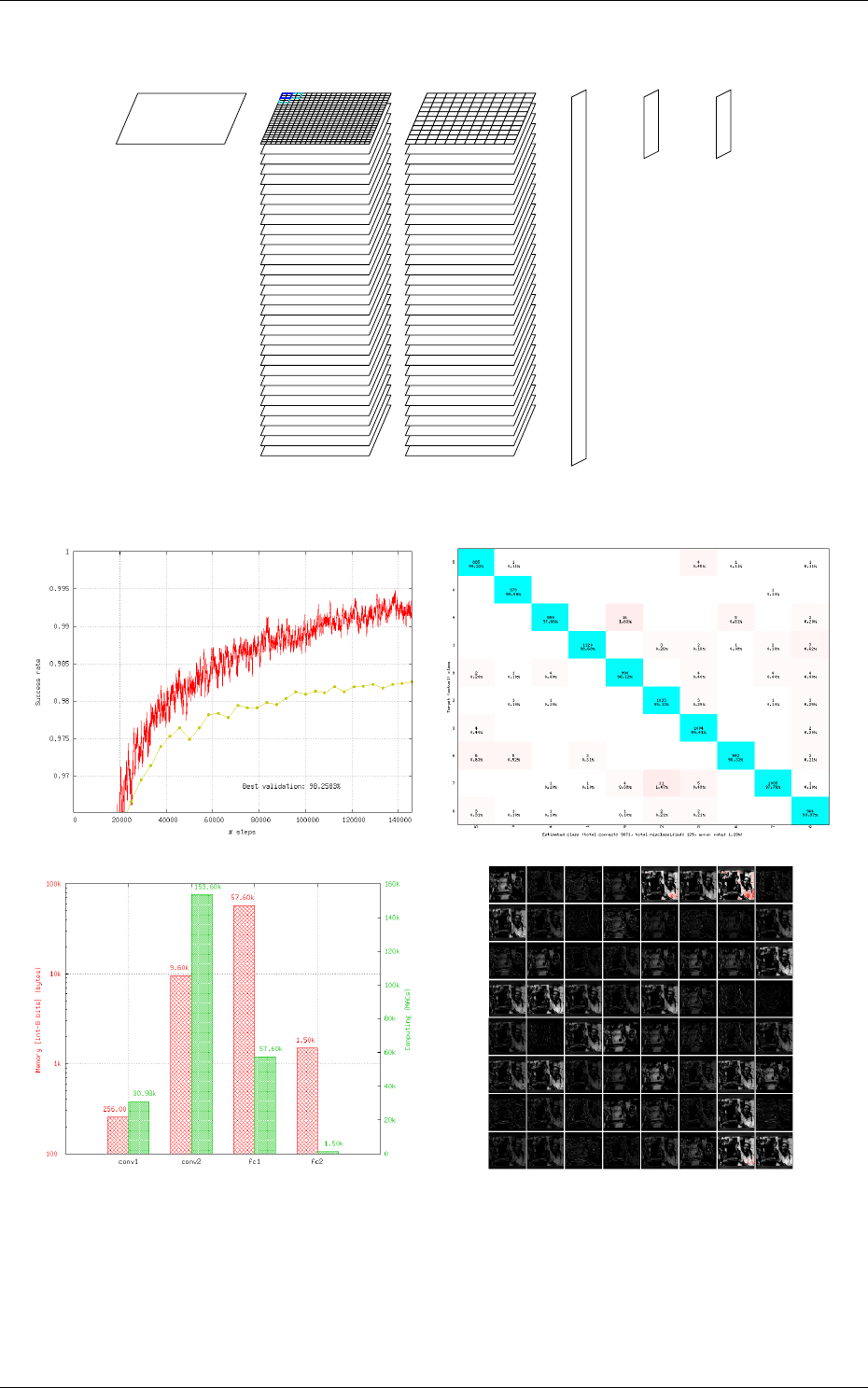

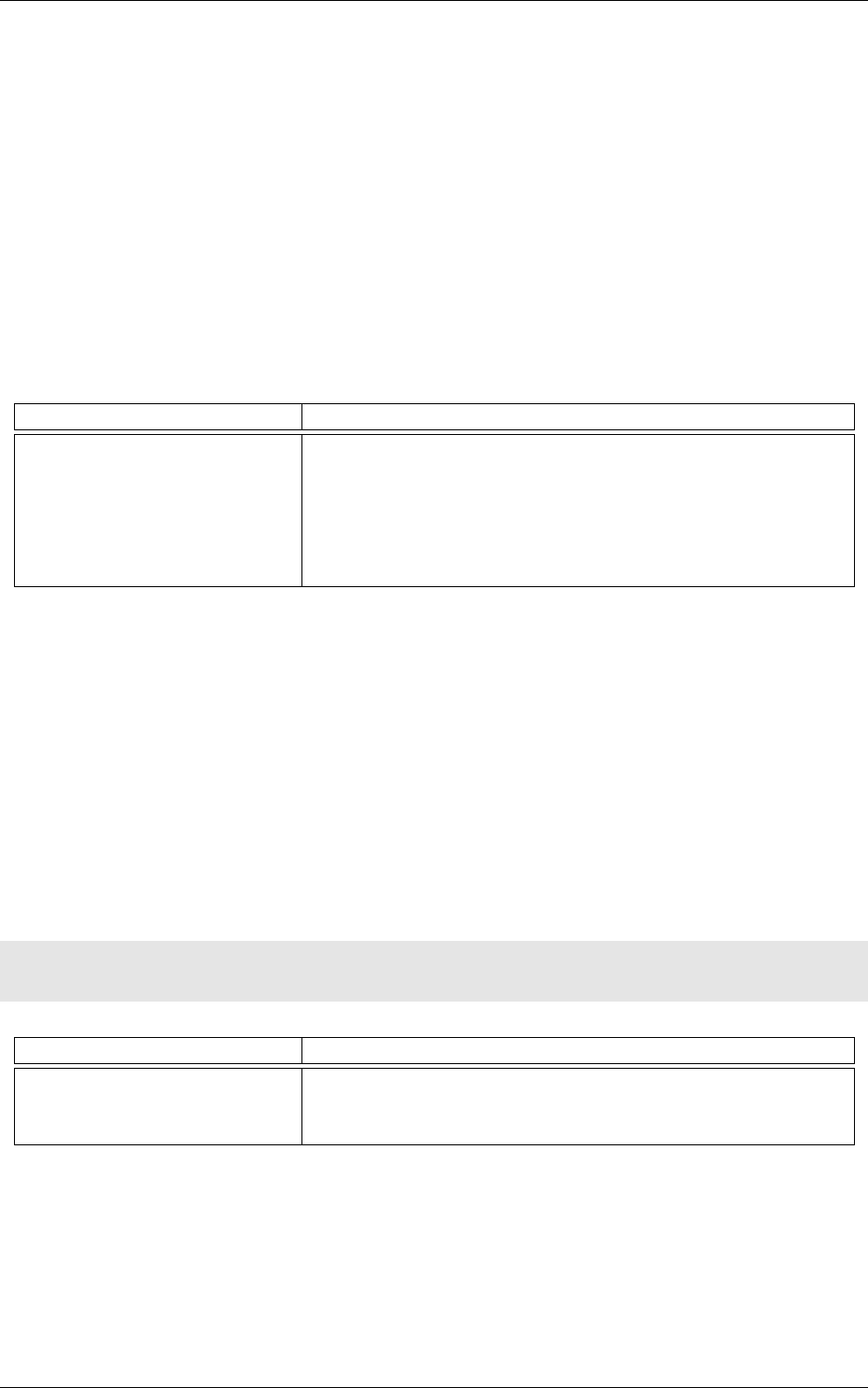

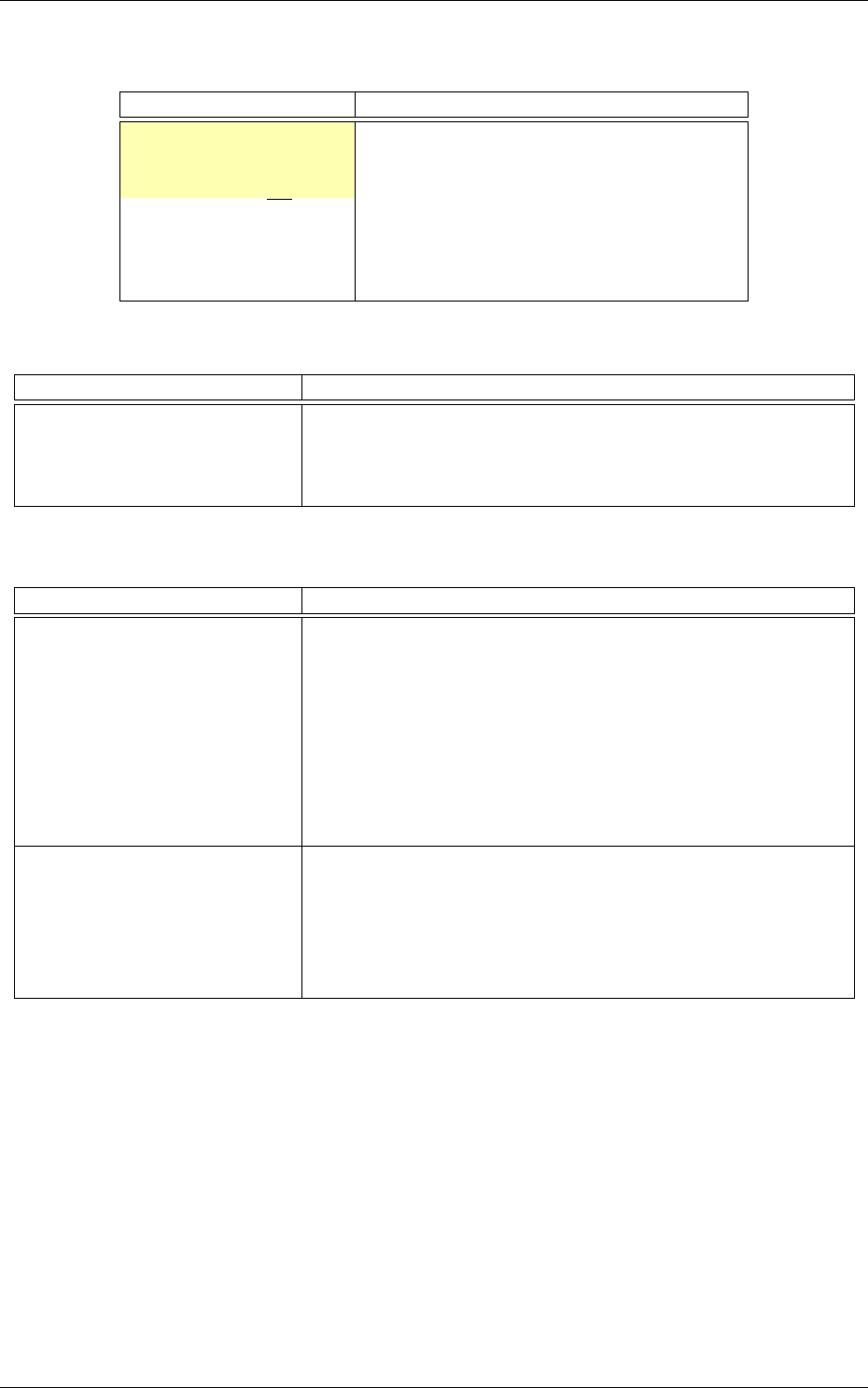

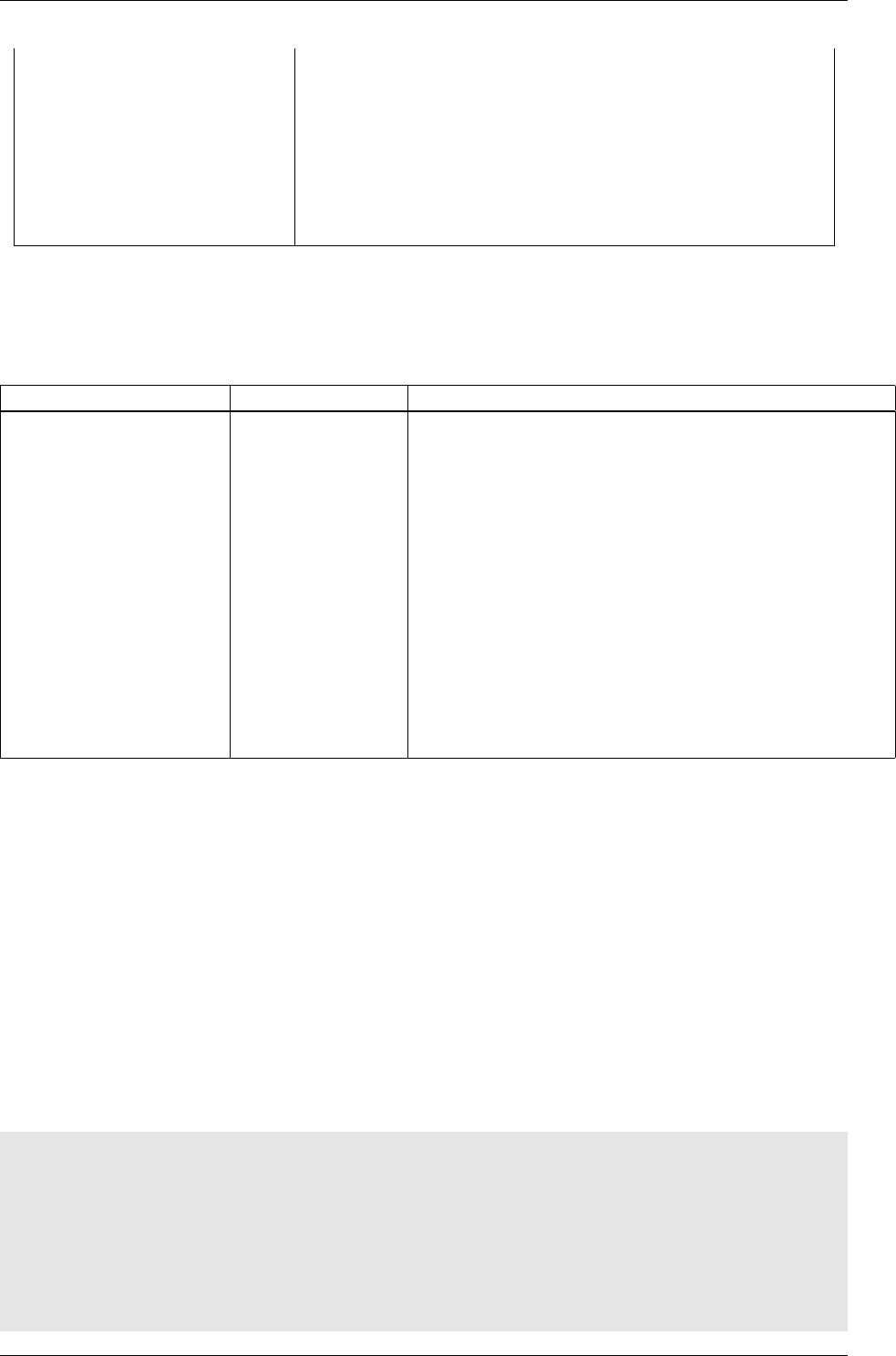

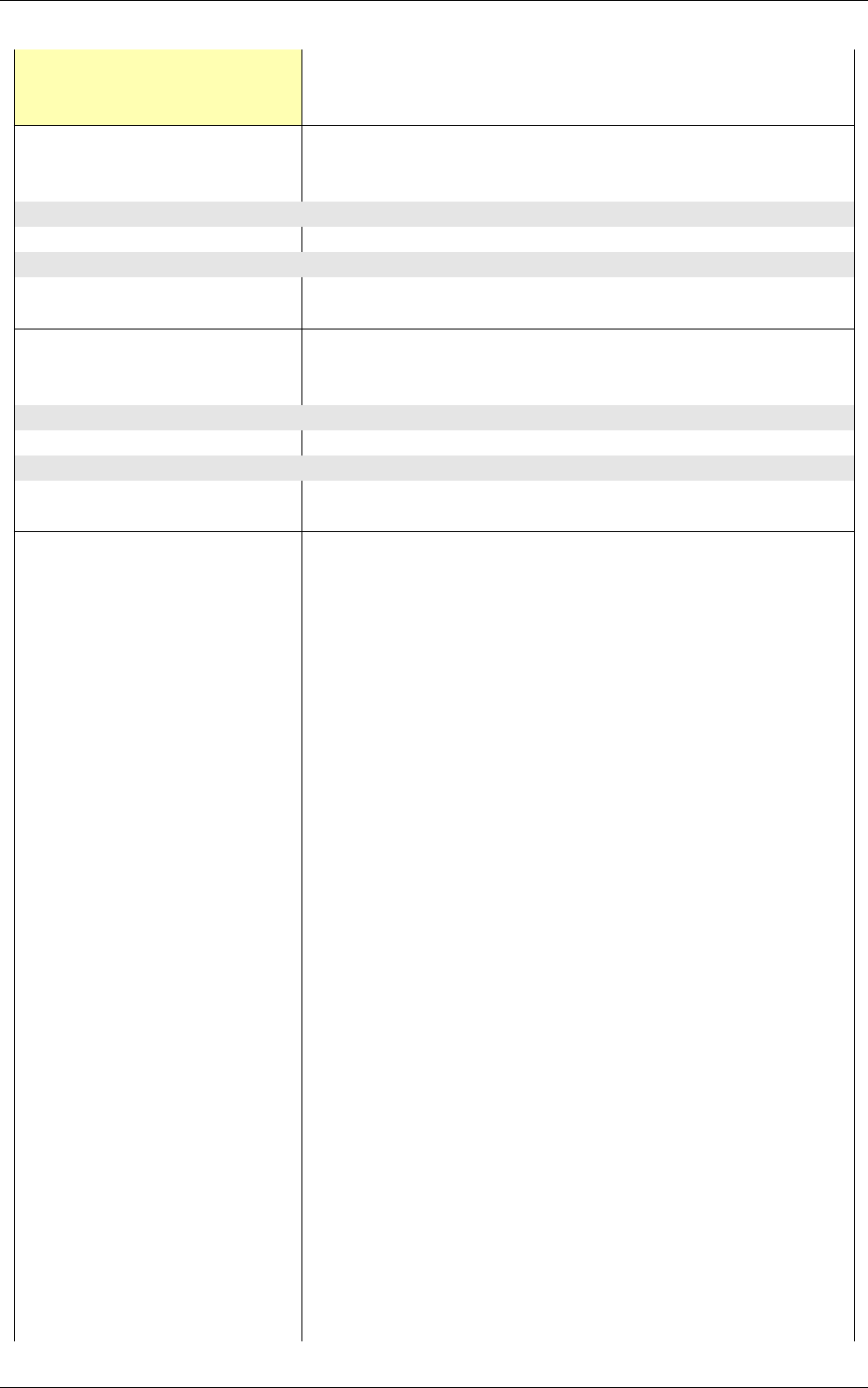

The resulting DNN is shown in figure 1.

The learning is accelerated in GPU using the NVIDIA

®

cuDNN framework, integrated into

the toolflow. Using GPU acceleration, learning times can be reduced typically by two orders of

magnitude, enabling the learning of large databases within tens of minutes to a few hours instead

of several days or weeks for non-GPU accelerated learning.

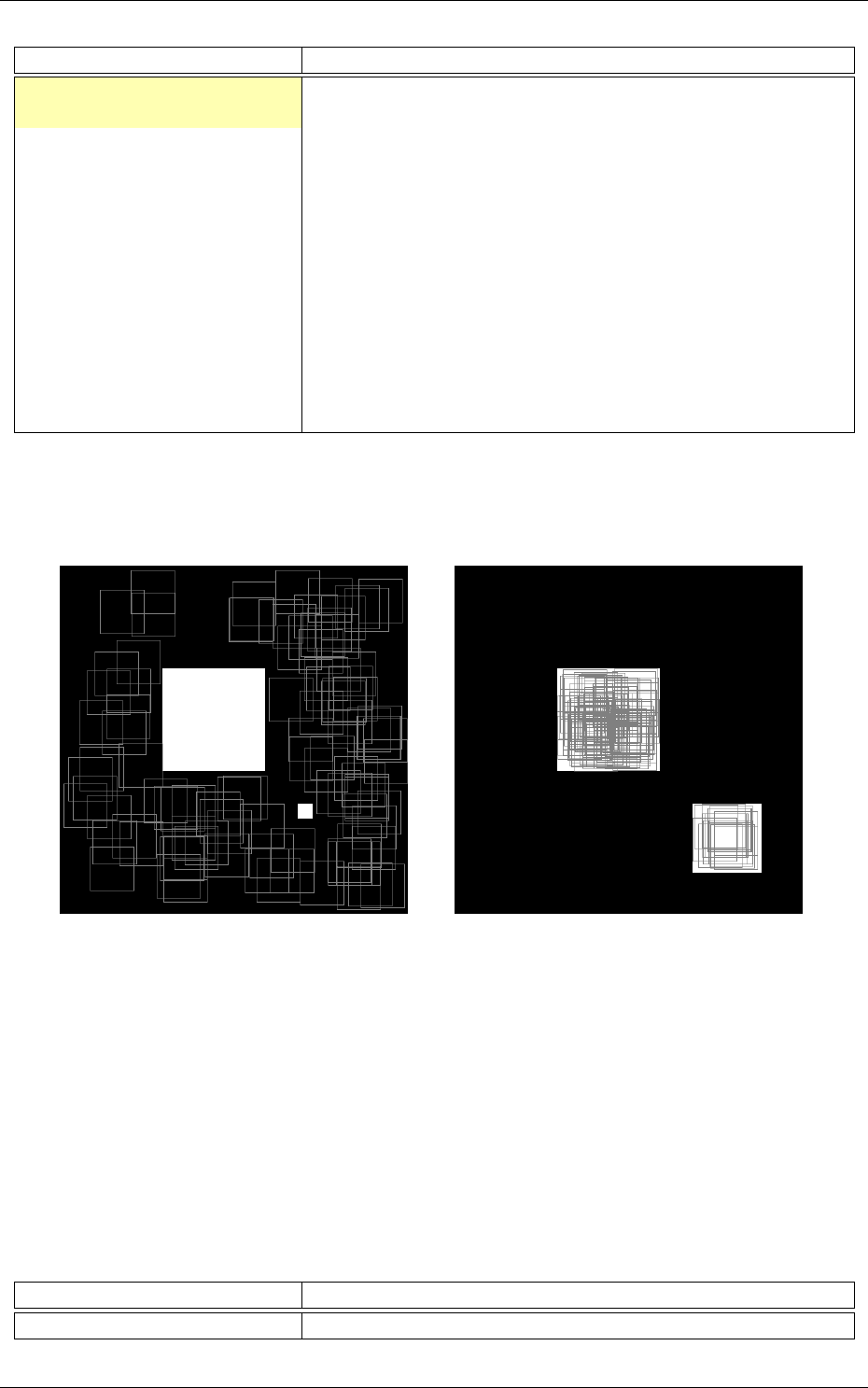

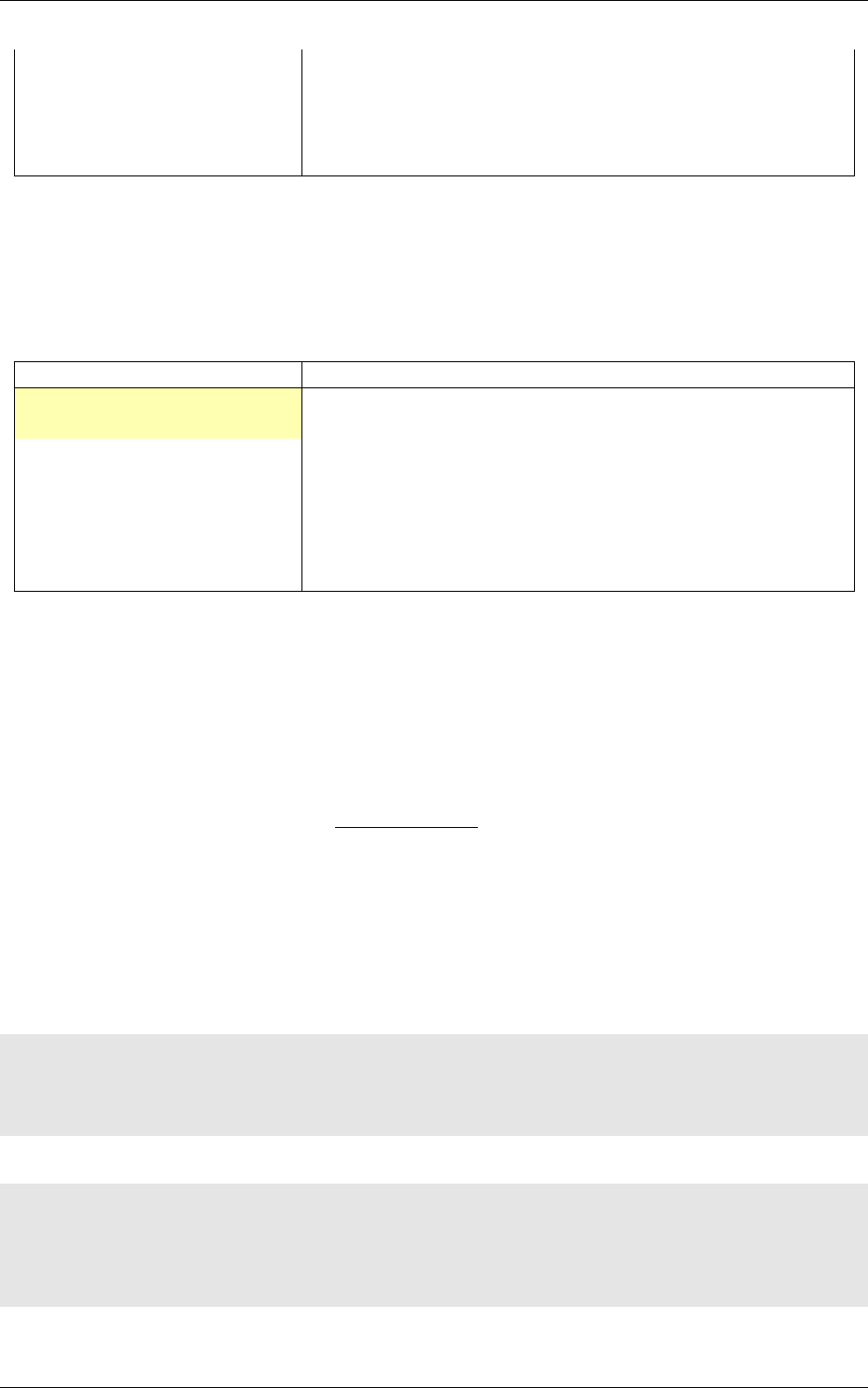

1.4 Performances evaluation

The software automatically outputs all the information needed for the network applicative per-

formances analysis, such as the recognition rate and the validation score during the learning; the

confusion matrix during learning, validation and test; the memory and computation requirements

of the network; the output maps activity for each layer, and so on, as shown in figure 2.

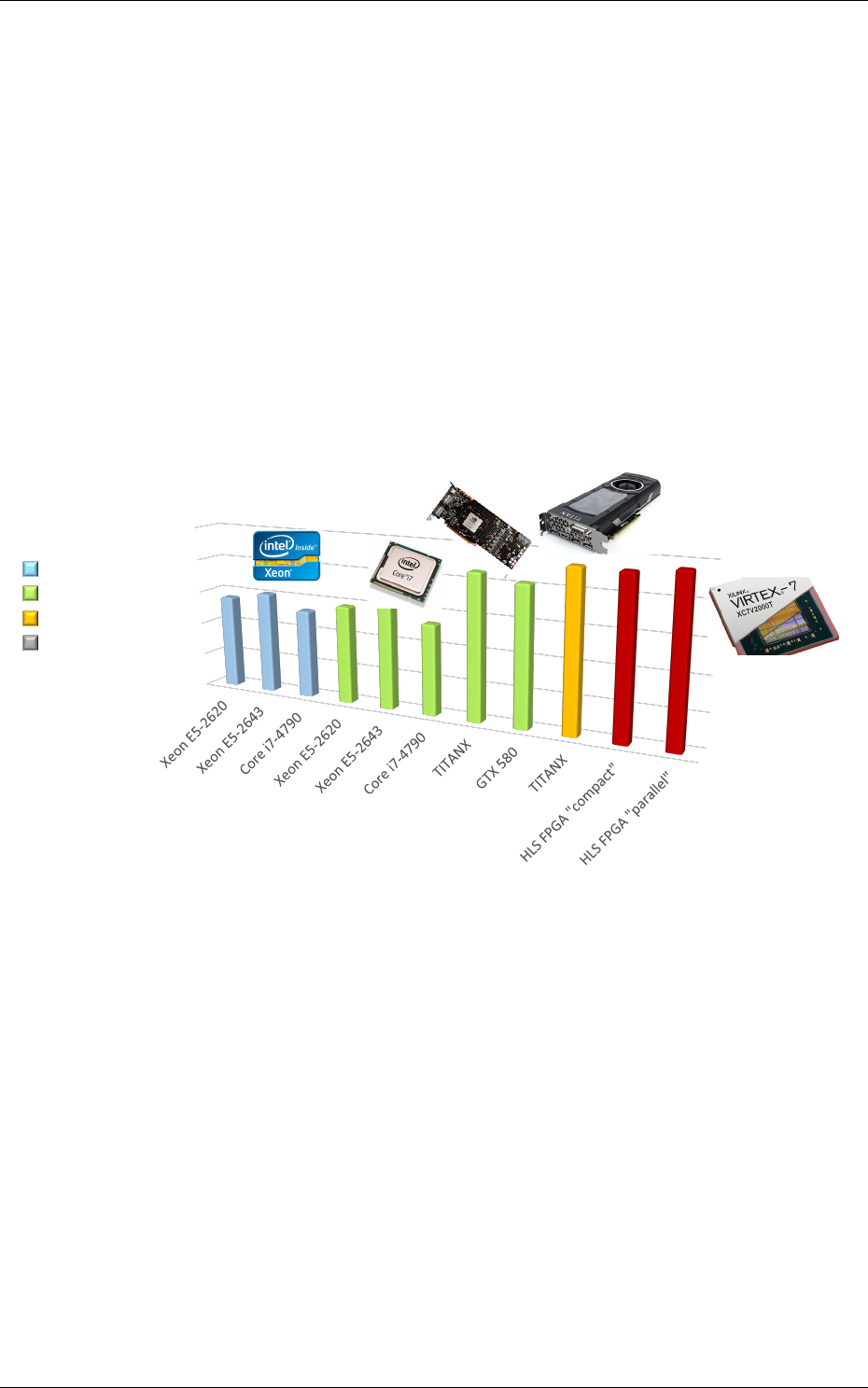

1.5 Hardware exports

Once the learned DNN recognition rate performances are satisfying, an optimized version of the

network can be automatically exported for various embedded targets. An automated network

computation performances benchmarking can also be performed among different targets.

The following targets are currently supported by the toolflow:

•Plain C code (no dynamic memory allocation, no floating point processing);

8/82

env

24x24

conv1

32 (22x22)

pool1

32 (11x11) Max

fc1

60

fc2

10

softmax

10

Figure 1: Automatically generated and ready to learn DNN from the INI configuration file example.

Recognition rate and validation score Confusion matrix

Memory and computation requirements Output maps activity

Figure 2: Example of information automatically generated by the software during and after learning.

•C code accelerated with OpenMP;

•C code tailored for High-Level Synthesis (HLS) with Xilinx®Vivado®HLS;

Direct synthesis to FPGA, with timing and utilization after routing;

9/82

Possibility to constrain the maximum number of clock cycles desired to compute the

whole network;

FPGA utilization vs number of clock cycle trade-off analysis;

•OpenCL code optimized for either CPU/DSP or GPU;

•Cuda kernels, cuDNN and TensorRT code optimized for NVIDIA®GPUs.

Different automated optimizations are embedded in the exports:

•

DNN weights and signal data precision reduction (down to 8 bit integers or less for custom

FPGA IPs);

•Non-linear network activation functions approximations;

•Different weights discretization methods.

The exports are generated automatically and come with a Makefile and a working testbench,

including the pre-processed testing dataset. Once generated, the testbench is ready to be compiled

and executed on the target platform. The applicative performance (recognition rate) as well as the

computing time per input data can then be directly mesured by the testbench.

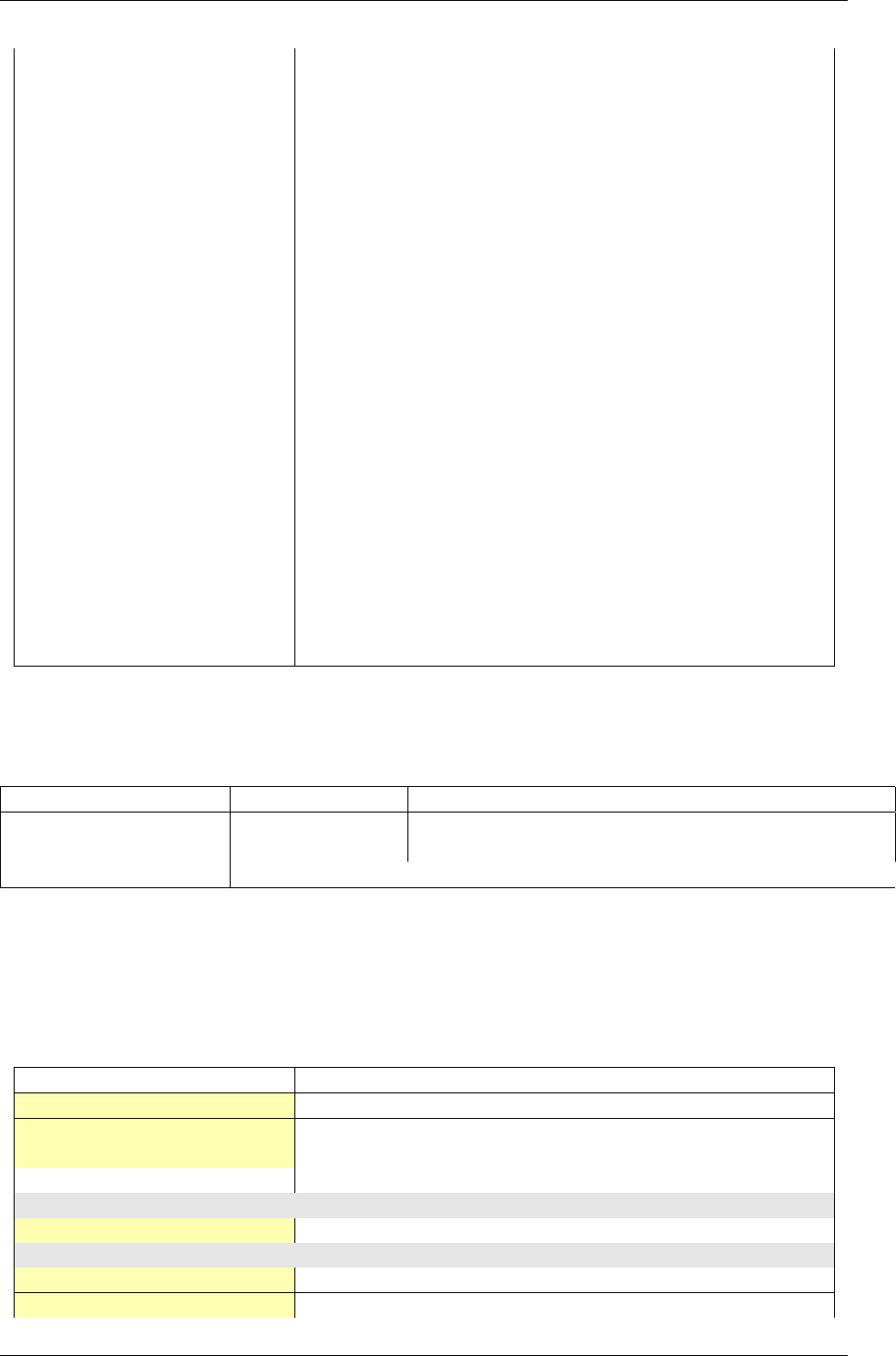

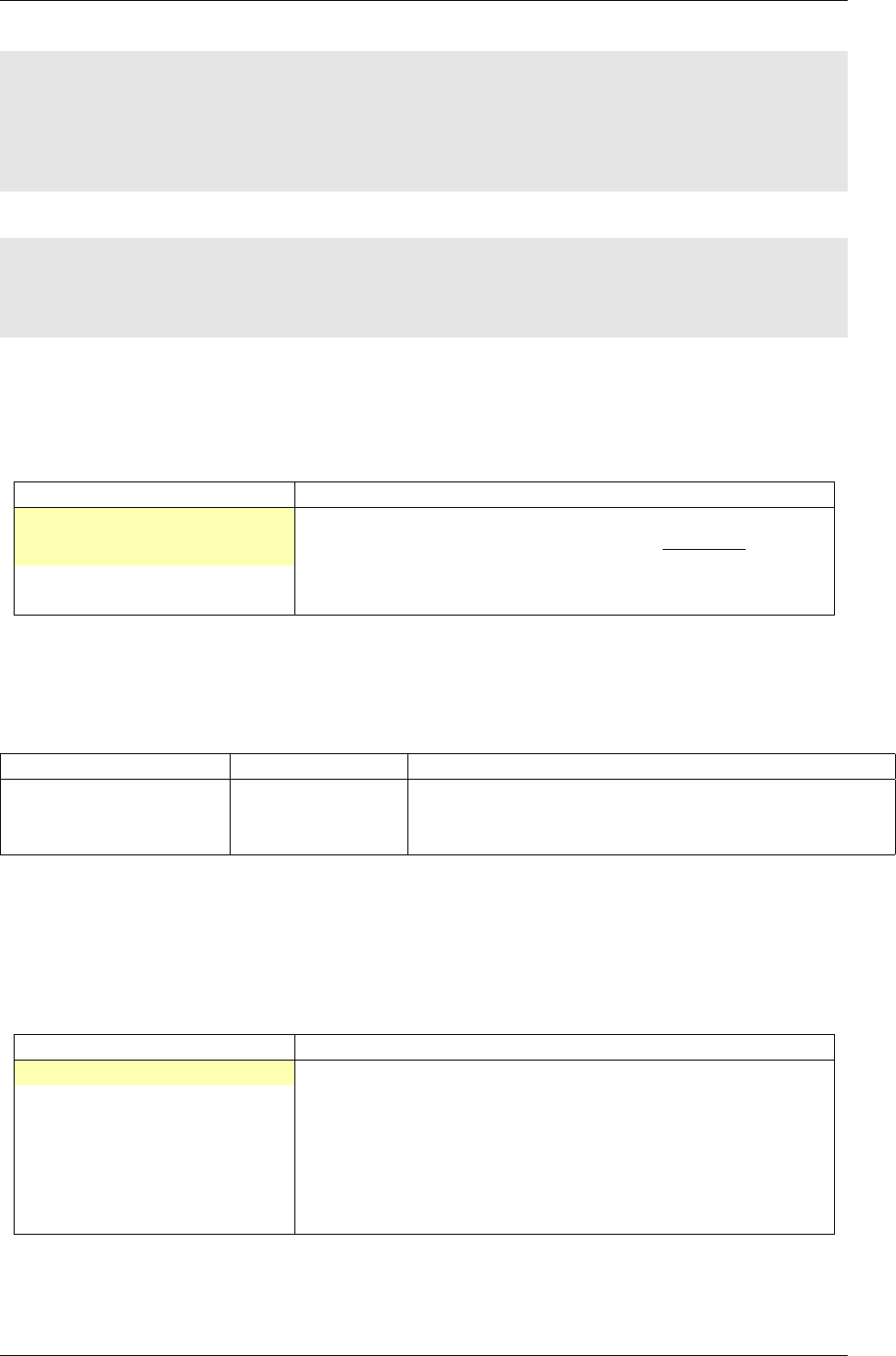

OpenMP

OpenCL

CUDA

HLS FPGA

1

10

100

1000

10000

100000

Kpixels image / s

Figure 3: Example of network benchmarking on different hardware targets.

The figure 3 shows an example of benchmarking results of the previous DNN on different targets

(in log scale). Compared to desktop CPUs, the number of input image pixels processed per second

is more than one order of magnitude higher with GPUsand at least two orders of magnitude better

with synthesized DNN on FPGA.

1.6 Summary

The N2D2 platform is today a complete and production ready neural network building tool, which

does not require advanced knownledges in deep learning to be used. It is tailored for fast neural

network applications generation and porting with minimum overhead in terms of database creation

and management, data pre-processing, networks configuration and optimized code generation,

which can save months of manual porting and verification effort to a single automated step in the

tool.

10/82

2 About N2D2-IP

While N2D2 is our deep learning open-source core framework, some modules referred as "N2D2-IP"

in the manual, are only available through custom license agreement with CEA LIST.

If you are interested in obtaining some of these modules, please contact our business developer

for more information on available licensing options:

Sandrine VARENNE (Sandrine.VARENNE@cea.fr)

In addition to N2D2-IP modules, we can also provide our expertise to design specific solutions

for integrating DNN in embedded hardware systems, where power, latency, form factor and/or

cost are constrained. We can target CPU/DSP/GPU CoTS hardware as well as our own PNeuro

(programmable) and DNeuro (dataflow) dedicated hardware accelerator IPs for DNN on FPGA or

ASIC.

3 Performing simulations

3.1 Obtaining the latest version of this manual

Before going further, please make sure you are reading the latest version of this manual. It is located

in the manual sub-directory. To compile the manual in PDF, just run the following command:

cd manual && make

In order to compile the manual, you must have

pdflatex

and

bibtex

installed, as well as some

common LaTeX packages.

•

On Ubuntu, this can be done by installing the

texlive

and

texlive-latex-extra

software

packages.

•

On Windows, you can install the

MiKTeX

software, which includes everything needed and will

install the required LaTeX packages on the fly.

3.2 Minimum system requirements

•Supported processors:

ARM Cortex A15 (tested on Tegra K1)

ARM Cortex A53/A57 (tested on Tegra X1)

Pentium-compatible PC (Pentium III, Athlon or more-recent system recommended)

•Supported operating systems:

Windows

≥

7 or Windows Server

≥

2012, 64 bits with Visual Studio

≥

2015.2 (2015

Update 2)

GNU/Linux with GCC ≥4.4 (tested on RHEL ≥6, Debian ≥6, Ubuntu ≥14.04)

•At least 256 MB of RAM (1 GB with GPU/CUDA) for MNIST dataset processing

•At least 150 MB available hard disk space + 350 MB for MNIST dataset processing

For CUDA acceleration:

•CUDA ≥6.5 and CuDNN ≥1.0

•

NVIDIA GPU with CUDA compute capability

≥

3 (starting from Kepler micro-architecture)

•At least 512 MB GPU RAM for MNIST dataset processing

11/82

3.3 Obtaining N2D2

3.3.1 Prerequisites

Red Hat Enterprise Linux (RHEL) 6 Make sure you have the following packages installed:

•cmake

•gnuplot

•opencv

•opencv-devel (may require the rhel-x86_64-workstation-optional-6 repository channel)

Plus, to be able to use GPU acceleration:

•Install the CUDA repository package:

rpm -Uhv http://developer.download.nvidia.com/compute/cuda/repos/rhel6/x86_64/cuda-repo-

rhel6-7.5-18.x86_64.rpm

yum clean expire-cache

yum install cuda

•

Install cuDNN from the NVIDIA website: register to NVIDIA Developer and download the lat-

est version of cuDNN. Simply copy the header and library files from the cuDNN archive to the

corresponding directories in the CUDA installation path (by default:

/usr/local/cuda/include

and /usr/local/cuda/lib64, respectively).

•

Make sure the CUDA library path (e.g.

/usr/local/cuda/lib64

) is added to the

LD_LIBRARY_PATH

environment variable.

Ubuntu

Make sure you have the following packages installed, if they are available on your Ubuntu

version:

•cmake

•gnuplot

•libopencv-dev

•libcv-dev

•libhighgui-dev

Plus, to be able to use GPU acceleration:

•

Install the CUDA repository package matching your distribution. For example, for Ubuntu

14.04 64 bits:

wget http://developer.download.nvidia.com/compute/cuda/repos/ubuntu1404/x86_64/cuda-repo-

ubuntu1404_7.5-18_amd64.deb

dpkg -i cuda-repo-ubuntu1404_7.5-18_amd64.deb

•

Install the cuDNN repository package matching your distribution. For example, for Ubuntu

14.04 64 bits:

wget http://developer.download.nvidia.com/compute/machine-learning/repos/ubuntu1404/x86_64/

nvidia-machine-learning-repo-ubuntu1404_4.0-2_amd64.deb

dpkg -i nvidia-machine-learning-repo-ubuntu1404_4.0-2_amd64.deb

Note that the cuDNN repository package is provided by NVIDIA for Ubuntu starting from

version 14.04.

•Update the package lists: apt-get update

•Install the CUDA and cuDNN required packages:

apt-get install cuda-core-7-5 cuda-cudart-dev-7-5 cuda-cublas-dev-7-5 cuda-curand-dev-7-5

libcudnn5-dev

•Make sure there is a symlink to /usr/local/cuda:

ln -s /usr/local/cuda-7.5 /usr/local/cuda

•

Make sure the CUDA library path (e.g.

/usr/local/cuda/lib64

) is added to the

LD_LIBRARY_PATH

environment variable.

12/82

Windows On Windows 64 bits, Visual Studio ≥2015.2 (2015 Update 2) is required.

Make sure you have the following software installed:

•CMake (http://www.cmake.org/): download and run the Windows installer.

•dirent.h

C++ header (

https://github.com/tronkko/dirent

): to be put in the Visual

Studio include path.

•

Gnuplot (

http://www.gnuplot.info/

): the bin sub-directory in the install path needs to be

added to the Windows PATH environment variable.

•

OpenCV (

http://opencv.org/

): download the latest 2.x version for Windows and extract it

to, for example,

C:\OpenCV\

. Make sure to define the environment variable

OpenCV_DIR

to point

to

C:\OpenCV\opencv\build

. Make sure to add the bin sub-directory (

C:\OpenCV\opencv\build\x64

\vc12\bin) to the Windows PATH environment variable.

Plus, to be able to use GPU acceleration:

•

Download and install CUDA toolkit 8.0 located at

https://developer.nvidia.com/compute/

cuda/8.0/prod/local_installers/cuda_8.0.44_windows-exe:

rename cuda_8.0.44_windows-exe cuda_8.0.44_windows.exe

cuda_8.0.44_windows.exe -s compiler_8.0 cublas_8.0 cublas_dev_8.0 cudart_8.0 curand_8.0

curand_dev_8.0

•Update the PATH environment variable:

set PATH=%ProgramFiles%\NVIDIA GPU Computing Toolkit\CUDA\v8.0\bin;%ProgramFiles%\NVIDIA GPU

Computing Toolkit\CUDA\v8.0\libnvvp;%PATH%

•

Download and install cuDNN 8.0 located at

http://developer.download.nvidia.com/

compute/redist/cudnn/v5.1/cudnn-8.0-windows7-x64-v5.1.zip

(the following command

assumes that you have 7-Zip installed):

7z x cudnn-8.0-windows7-x64-v5.1.zip

copy cuda\include\*.* ^

"%ProgramFiles%\NVIDIA GPU Computing Toolkit\CUDA\v8.0\include\"

copy cuda\lib\x64\*.* ^

"%ProgramFiles%\NVIDIA GPU Computing Toolkit\CUDA\v8.0\lib\x64\"

copy cuda\bin\*.* ^

"%ProgramFiles%\NVIDIA GPU Computing Toolkit\CUDA\v8.0\bin\"

3.3.2 Getting the sources

Use the following command:

git clone git@github.com:CEA-LIST/N2D2.git

3.3.3 Compilation

To compile the program:

mkdir build

cd build

cmake .. && make

On Windows, you may have to specify the generator, for example:

cmake .. -G"Visual Studio 14"

Then open the newly created N2D2 project in Visual Studio 2015. Select "Release" for the build

target. Right click on ALL_BUILD item and select "Build".

13/82

3.4 Downloading training datasets

A python script located in the repository root directory allows you to select and automatically

download some well-known datasets, like MNIST and GTSRB (the script requires Python 2.x with

bindings for GTK 2 package):

./tools/install_stimuli_gui.py

By default, the datasets are downloaded in the path specified in the

N2D2_DATA

environment

variable, which is the root path used by the N2D2 tool to locate the databases. If the

N2D2_DATA

variable is not set, the default value used is

/local/$USER/n2d2_data/

(or

/local/n2d2_data/

if

the USER environment variable is not set) on Linux and C:\n2d2_data\ on Windows.

Please make sure you have write access to the

N2D2_DATA

path, or if not set, in the default

/local/$USER/n2d2_data/ path.

3.5 Run the learning

The following command will run the learning for 600,000 image presentations/steps and log the

performances of the network every 10,000 steps:

./n2d2 "mnist24_16c4s2_24c5s2_150_10.ini" -learn 600000 -log 10000

Note: you may want to check the gradient computation using the

-check

option. Note that it

can be extremely long and can occasionally fail if the required precision is too high.

3.6 Test a learned network

After the learning is completed, this command evaluate the network performances on the test data

set:

./n2d2 "mnist24_16c4s2_24c5s2_150_10.ini" -test

3.6.1 Interpreting the results

Recognition rate

The recognition rate and the validation score are reported during the learning

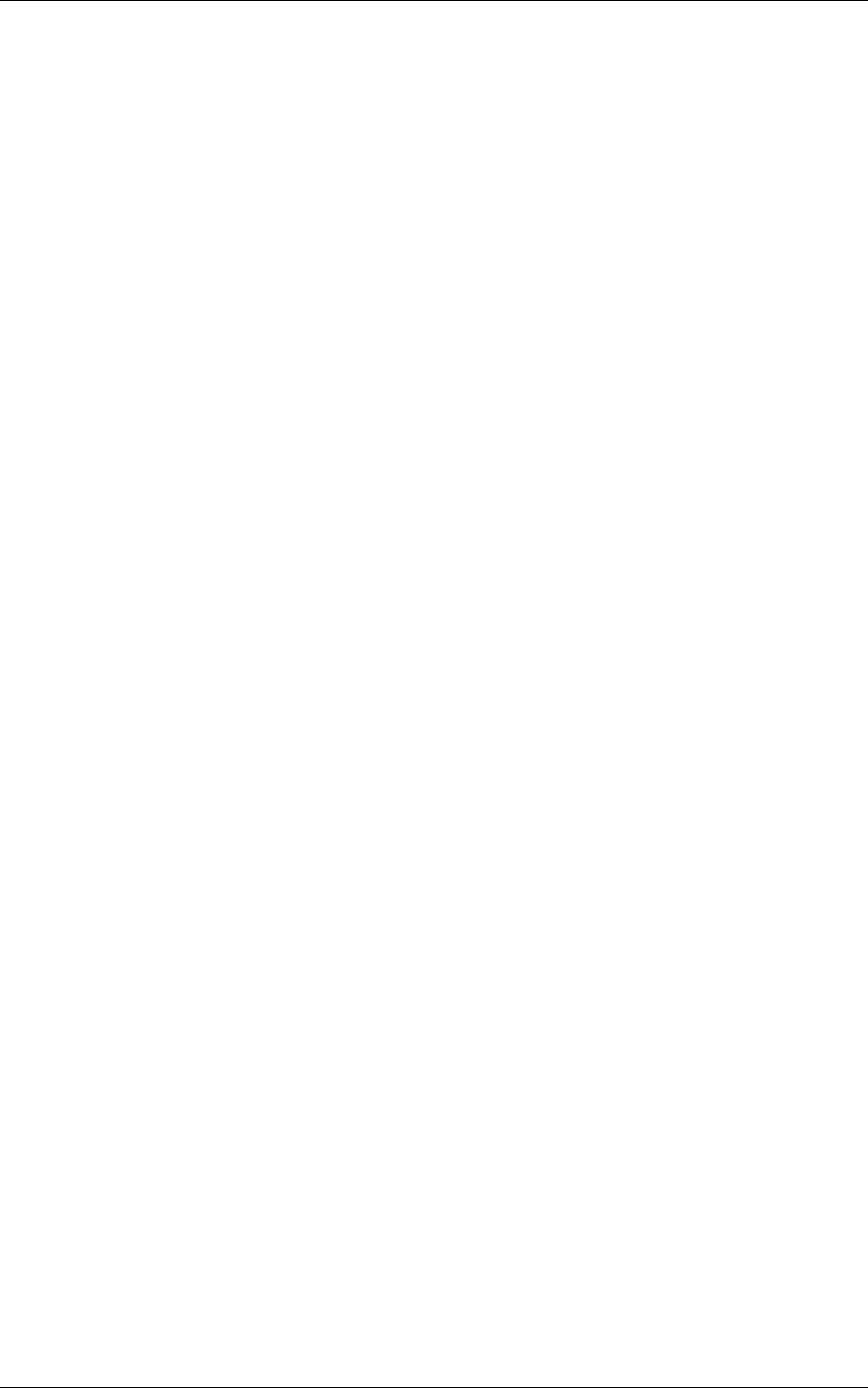

in the TargetScore_*/Success_validation.png file, as shown in figure 4.

Confusion matrix

The software automatically outputs the confusion matrix during learning,

validation and test, with an example shown in figure 5. Each row of the matrix contains the number

of occurrences estimated by the network for each label, for all the data corresponding to a single

actual, target label. Or equivalently, each column of the matrix contains the number of actual,

target label occurrences, corresponding to the same estimated label. Idealy, the matrix should be

diagonal, with no occurrence of an estimated label for a different actual label (network mistake).

The confusion matrix reports can be found in the simulation directory:

•TargetScore_*/ConfusionMatrix_learning.png;

•TargetScore_*/ConfusionMatrix_validation.png;

•TargetScore_*/ConfusionMatrix_test.png.

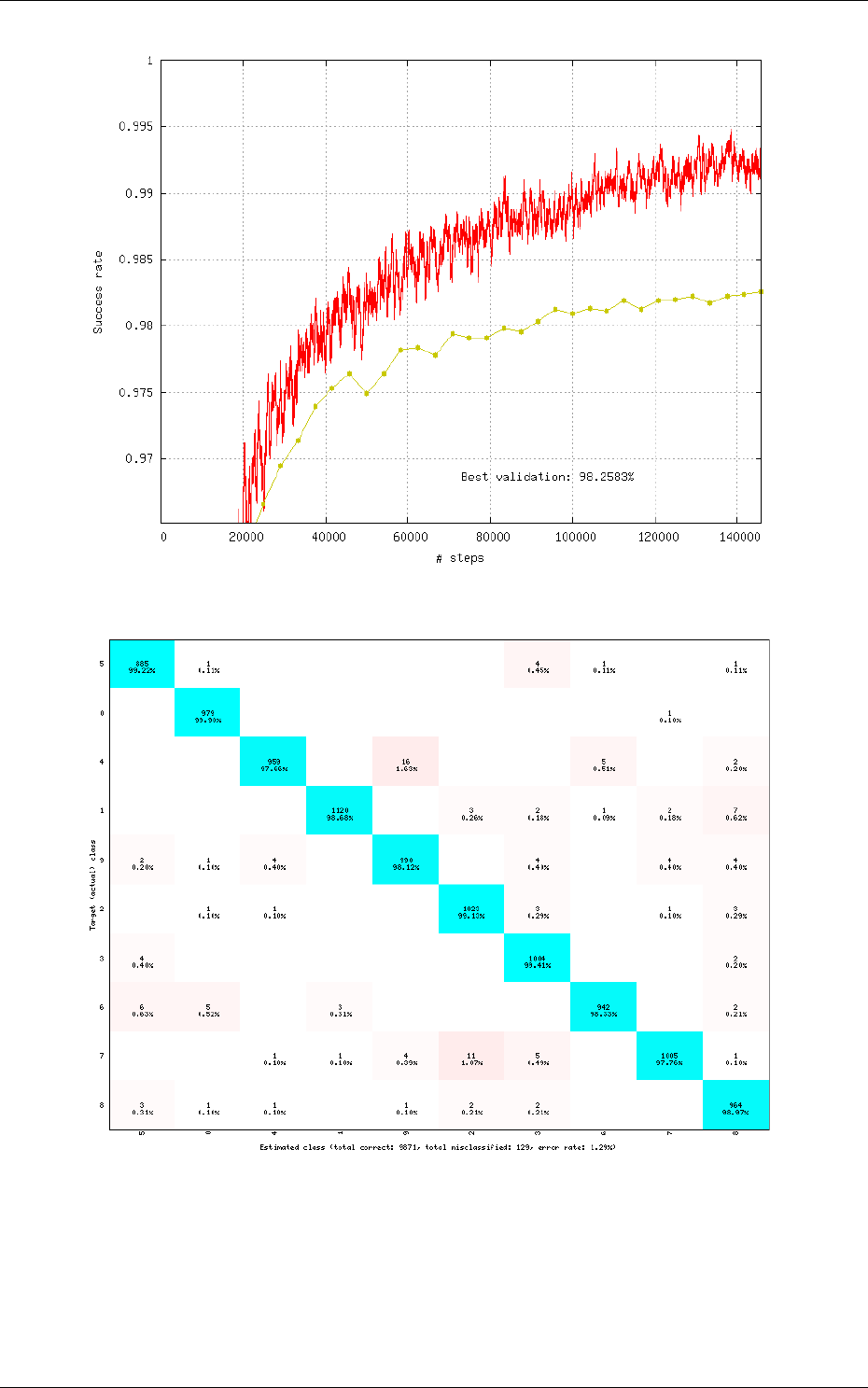

Memory and computation requirements

The software also report the memory and compu-

tation requirements of the network, as shown in figure 6. The corresponding report can be found in

the stats sub-directory of the simulation.

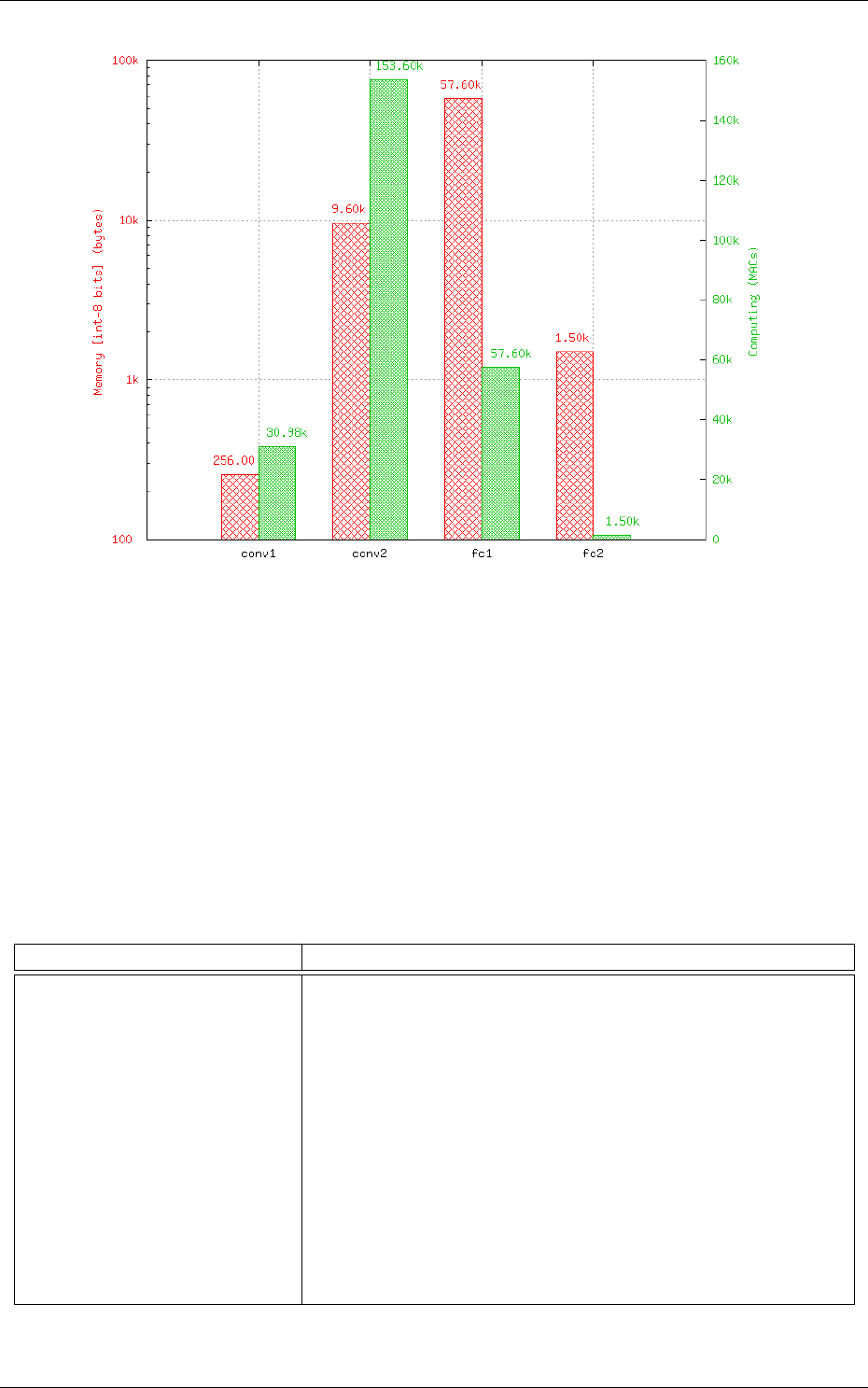

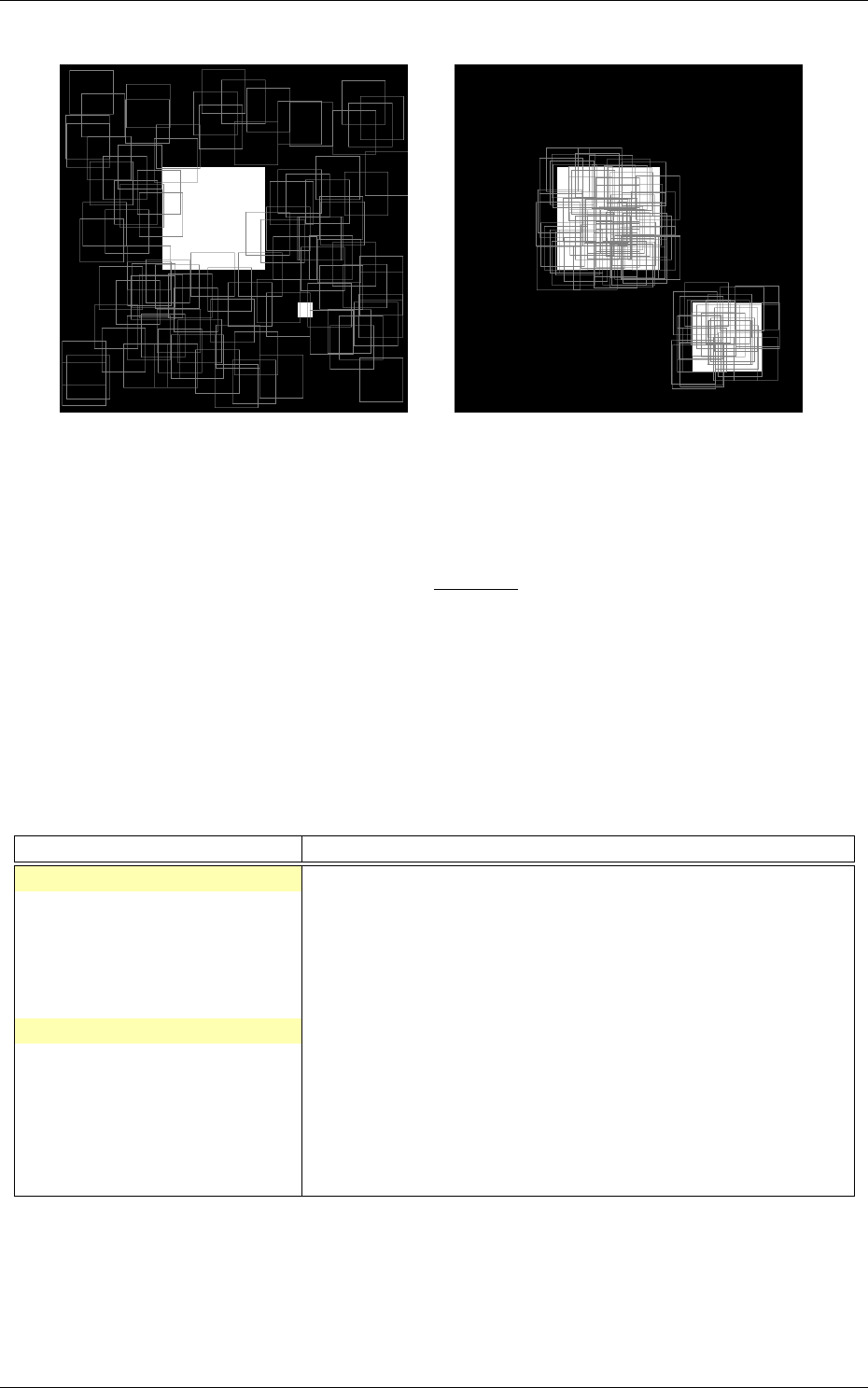

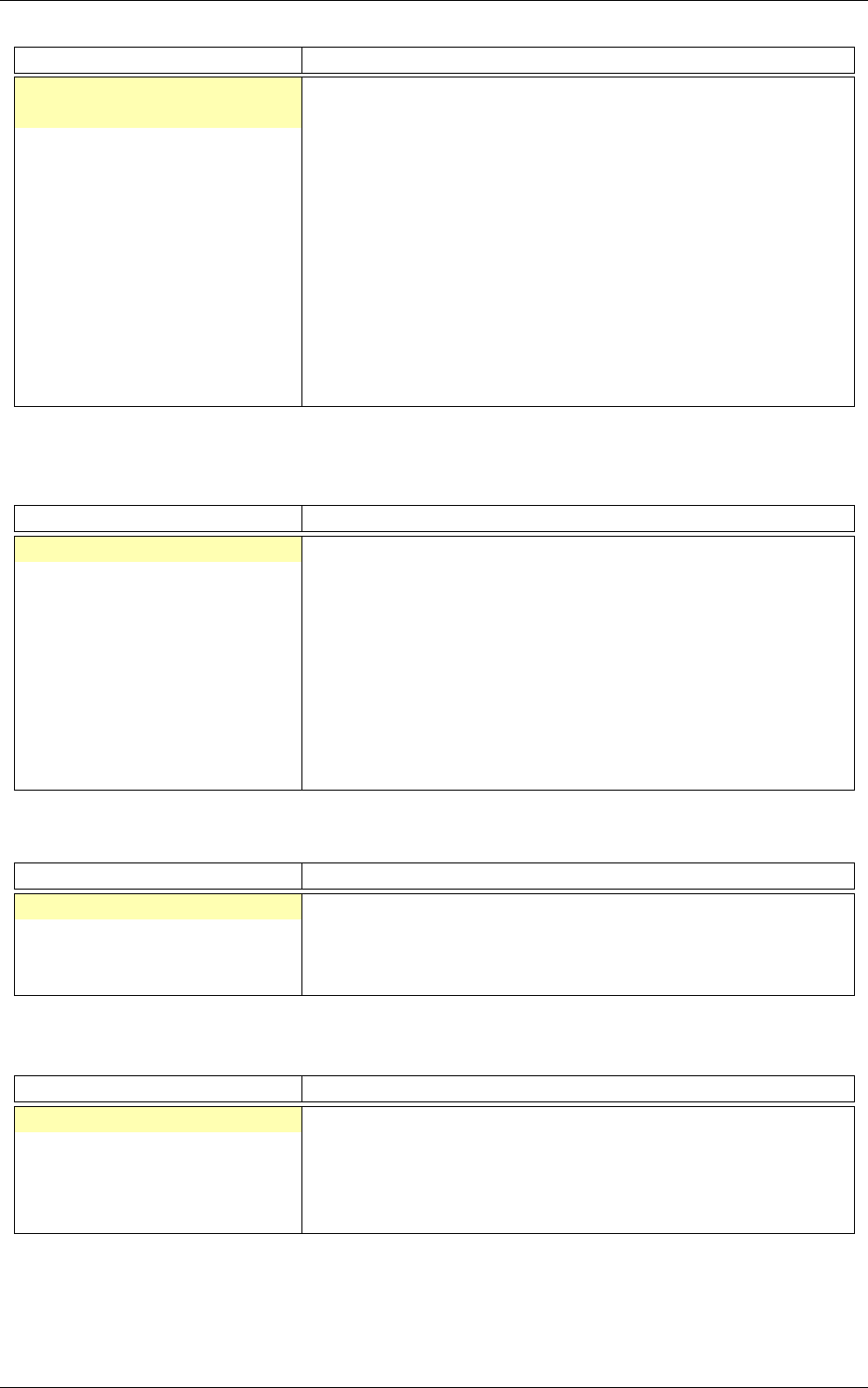

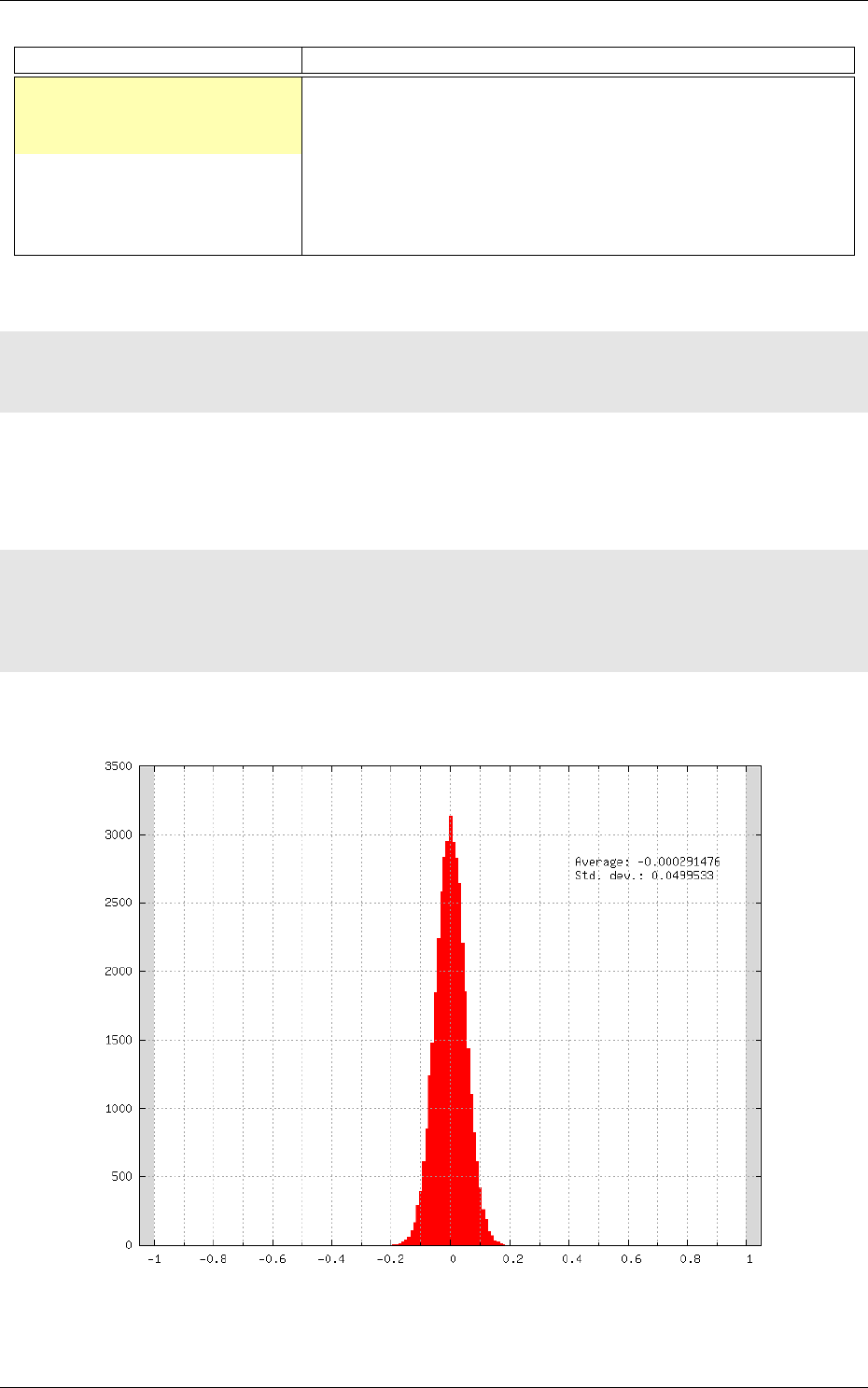

Kernels and weights distribution

The synaptic weights obtained during and after the learning

can be analyzed, in terms of distribution (weights sub-directory of the simulation) or in terms of

kernels (kernels sub-directory of the simulation), as shown in 7.

14/82

Figure 4: Recognition rate and validation score during learning.

Figure 5: Example of confusion matrix obtained after the learning.

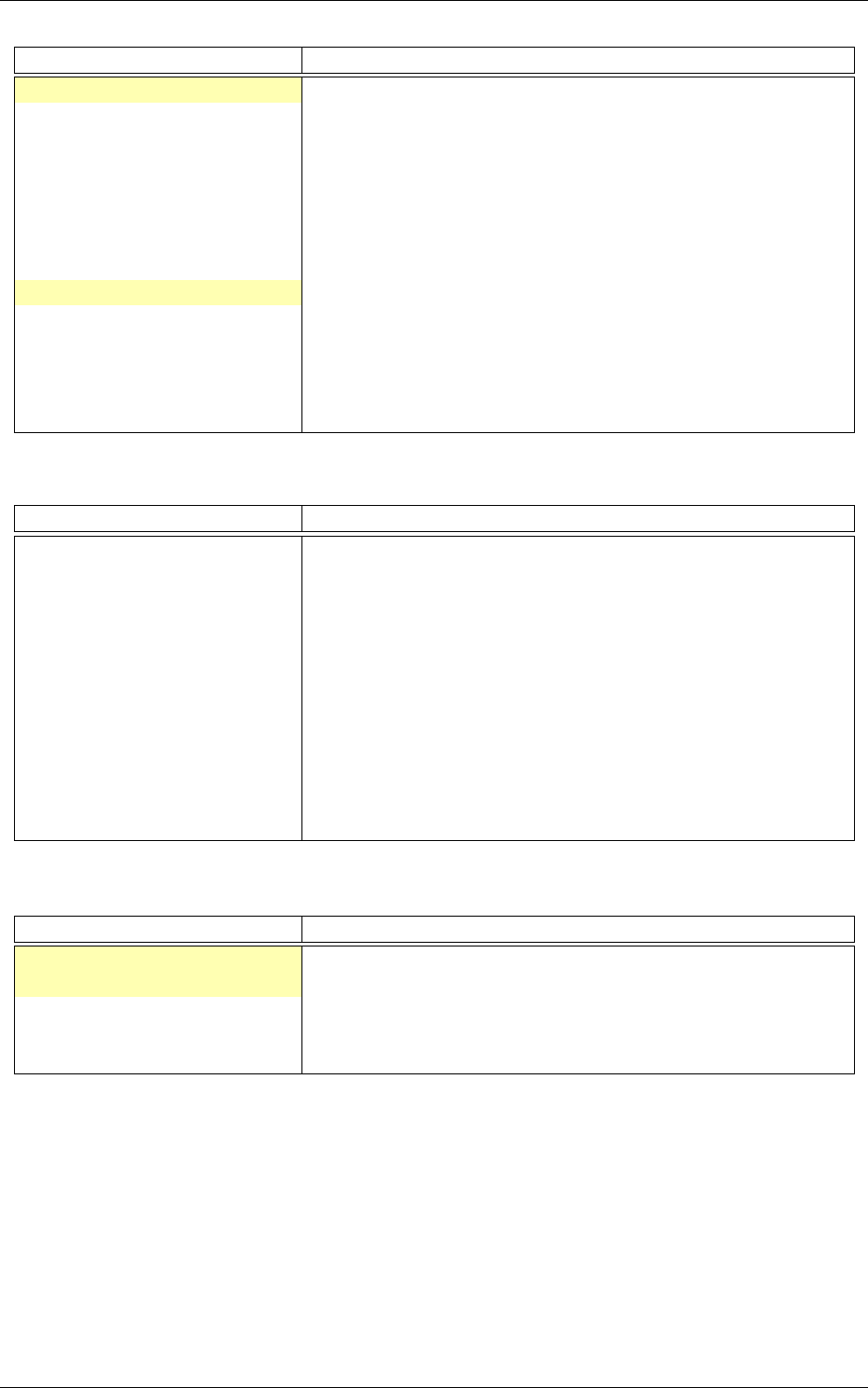

Output maps activity

The initial output maps activity for each layer can be visualized in the

outputs_init sub-directory of the simulation, as shown in figure 8.

3.7 Export a learned network

15/82

Figure 6: Example of memory and computation requirements of the network.

./n2d2 "mnist24_16c4s2_24c5s2_150_10.ini" -export CPP_OpenCL

Export types:

•CC export using OpenMP;

•C_HLS C export tailored for HLS with Vivado HLS;

•CPP_OpenCL C++ export using OpenCL;

•CPP_Cuda C++ export using Cuda;

•CPP_cuDNN C++ export using cuDNN;

•CPP_TensorRT C++ export using tensorRT 2.1 API;

•SC_Spike SystemC spike export.

Other program options related to the exports:

Option [default value] Description

-nbbits [8]

Number of bits for the weights and signals. Must be 8, 16, 32

or 64 for integer export, or -32, -64 for floating point export.

The number of bits can be arbitrary for the

C_HLS

export (for

example, 6 bits)

-calib [0]

Number of stimuli used for the calibration. 0 = no calibration

(default), -1 = use the full test dataset for calibration

-calib-passes [2]

Number of KL passes for determining the layer output values

distribution truncation threshold (0 = use the max. value,

no truncation)

-no-unsigned

If present, disable the use of unsigned data type in integer

exports

-db-export [-1]

Max. number of stimuli to export (0 = no dataset export, -1

= unlimited)

16/82

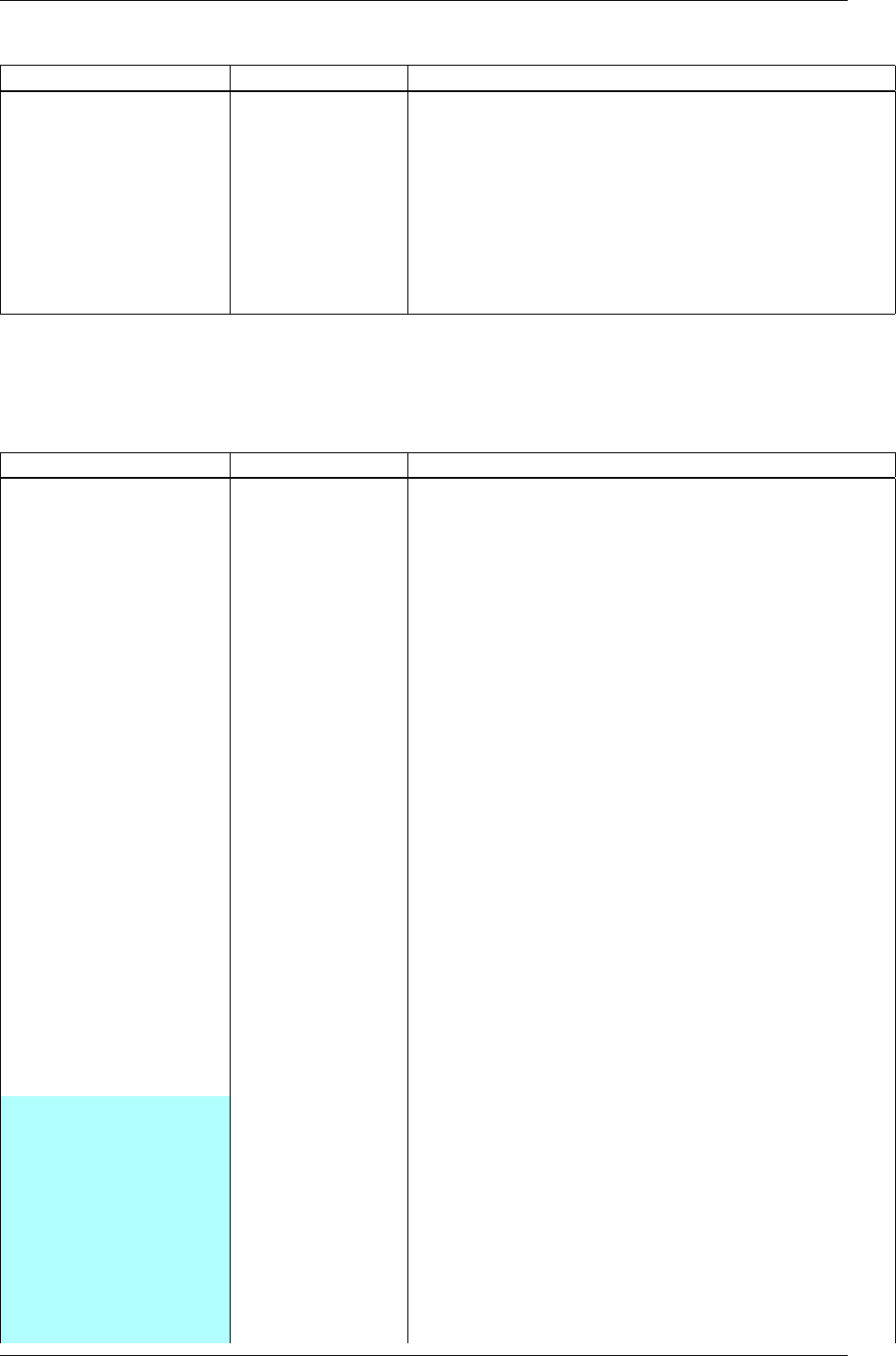

conv1 kernels conv2 kernels

conv1 weights distribution conv2 weights distribution

Figure 7: Example of kernels and weights distribution analysis for two convolutional layers.

3.7.1 CexportN2D2 IP only

Test the exported network:

cd export_C_int8

make

./bin/n2d2_test

The result should look like:

...

16 52 .0 0/ 17 62 ( avg = 93.757094%)

16 53 .0 0/ 17 63 ( avg = 93.760635%)

16 54 .0 0/ 17 64 ( avg = 93.764172%)

Te st ed 1764 s t i m u l i

S u c c e s s r a t e = 93.7 64172%

P ro ce ss t ime pe r s t i m u l u s = 1 87. 548 18 6 us (1 2 t h r e a d s )

Co nf us io n m a tri x :

−−−−−−−−−−−−−−−−−−−−−−−−−−−−−−−−−−−−−−−−−−−−−−−−−

| T \ E | 0 | 1 | 2 | 3 |

−−−−−−−−−−−−−−−−−−−−−−−−−−−−−−−−−−−−−−−−−−−−−−−−−

17/82

Figure 8: Output maps activity example of the first convolutional layer of the network.

| 0 | 329 | 1 | 5 | 2 |

| | 97.63% | 0.30% | 1.48% | 0.59% |

| 1 | 0 | 692 | 2 | 6 |

| | 0.00% | 98.86% | 0.29% | 0.86% |

| 2 | 11 | 27 | 609 | 55 |

| | 1.57% | 3.85% | 86.75% | 7.83% |

| 3 | 0 | 0 | 1 | 24 |

| | 0.00% | 0.00% | 4.00% | 96.00% |

−−−−−−−−−−−−−−−−−−−−−−−−−−−−−−−−−−−−−−−−−−−−−−−−−

T: T ar ge t E: Es t im a te d

3.7.2 CPP_OpenCL exportN2D2 IP only

The OpenCL export can run the generated program in GPU or CPU architectures. Compilation

features:

18/82

Preprocessor command [default value] Description

PROFILING [0]

Compile the binary with a synchronization be-

tween each layers and return the mean execution

time of each layer. This preprocessor option can

decrease performances.

GENERATE_KBIN [0]

Generate the binary output of the OpenCL kernel

.cl file use. The binary is store in the /bin folder.

LOAD_KBIN [0]

Indicate to the program to load an OpenCL ker-

nel as a binary from the /bin folder instead of a

.cl file.

CUDA [0]

Use the CUDA OpenCL SDK locate at

/usr/local/cuda

MALI [0]

Use the MALI OpenCL SDK locate at

/usr/MaliOpenCLSDKvXXX

INTEL [0]

Use the INTEL OpenCL SDK locate at

/opt/intel/opencl

AMD [1]

Use the AMD OpenCL SDK locate at

/opt/AM DAP P SDK −XXX

Program options related to the OpenCL export:

Option [default value] Description

-cpu

If present, force to use a CPU architecture to run the program

-gpu

If present, force to use a GPU architecture to run the program

-batch [1] Size of the batch to use

-stimulus [NULL]

Path to a specific input stimulus to test. For example: -

stimulus

/stimulus/env0000.pgm

command will test the file

env0000.pgm of the stimulus folder.

Test the exported network:

cd export_CPP_OpenCL_float32

make

./bin/n2d2_opencl_test -gpu

3.7.3 CPP_TensorRT export

The tensorRT 2.1 API export can run the generated program in NVIDIA GPU architecture. It use

CUDA and tensorRT 2.1 API library. The currently supported layers by the tensorRT 2.1 export

are : Convolutional, Pooling, Concatenation, Fully-Connected, Softmax and all activations type.

Custom layers implementation through the plugin factory and generic 8-bits calibrations inference

features are under development.

Program options related to the tensorRT 2.1 API export:

Option [default value] Description

-batch [1] Size of the batch to use

-dev [0] CUDA Device ID selection

-stimulus [NULL]

Path to a specific input stimulus to test. For example: -

stimulus

/stimulus/env0000.pgm

command will test the file

env0000.pgm of the stimulus folder.

-prof

Activates the layer wise profiling mechanism. This option

can decrease execution time performance.

-iter-build [1]

Sets the number of minimization build iterations done by

the tensorRT builder to find the best layer tactics.

19/82

Test the exported network with layer wise profiling:

cd export_CPP_TensorRT_float32

make

./bin/n2d2_tensorRT_test -prof

The results of the layer wise profiling should look like:

(19%) ∗∗∗∗ ∗∗∗∗∗ ∗∗∗∗∗ ∗∗∗∗∗ ∗∗∗∗∗∗∗∗∗∗∗∗∗∗ ∗∗∗∗∗ ∗∗ CONV1 + CONV1_ACTIVATION:

0. 02 194 67 ms

(05%) ∗∗∗∗∗∗∗∗∗∗∗∗ POOL1: 0 .0 06 75 57 3 ms

(13%) ∗∗∗∗∗∗∗∗∗∗∗∗∗∗∗∗∗∗∗∗∗∗∗∗∗∗∗∗ CONV2 + CONV2_ACTIVATION: 0. 015 90 89 ms

(05%) ∗∗∗∗∗∗∗∗∗∗∗∗ POOL2: 0 .0 06 16 04 7 ms

(14%) ∗∗∗∗∗∗∗∗∗∗∗∗∗∗∗∗∗∗∗∗∗∗∗∗∗∗∗∗∗∗ CONV3 + CONV3_ACTIVATION: 0 .0 15 97 1 3 ms

(19%) ∗∗∗∗ ∗∗∗∗∗ ∗∗∗∗∗ ∗∗∗∗∗ ∗∗∗∗∗∗∗∗∗∗∗∗∗∗ ∗∗∗∗∗ ∗∗ FC1 + FC1_ACTIVATION : 0 .0 2 22 24 2 ms

(13%) ∗∗∗∗∗∗∗∗∗∗∗∗∗∗∗∗∗∗∗∗∗∗∗∗∗∗∗∗ FC2 : 0 .0 149 013 ms

(08%) ∗∗∗∗∗∗∗ ∗∗∗∗∗∗∗∗∗∗∗ SOFTMAX: 0 . 01 00 63 3 ms

Average p r o f i l e d tensorRT p r o ce ss time p er s ti m ul u s = 0. 1 13 93 2 ms

3.7.4 CPP_cuDNN export

The cuDNN export can run the generated program in NVIDIA GPU architecture. It use CUDA

and cuDNN library. Compilation features:

Preprocessor command [default value] Description

PROFILING [0]

Compile the binary with a synchronization be-

tween each layers and return the mean execution

time of each layer. This preprocessor option can

decrease performances.

ARCH32 [0]

Compile the binary with the 32-bits architecture

compatibility.

Program options related to the cuDNN export:

Option [default value] Description

-batch [1] Size of the batch to use

-dev [0] CUDA Device ID selection

-stimulus [NULL]

Path to a specific input stimulus to test. For example: -

stimulus

/stimulus/env0000.pgm

command will test the file

env0000.pgm of the stimulus folder.

Test the exported network:

cd export_CPP_cuDNN_float32

make

./bin/n2d2_cudnn_test

3.7.5 C_HLS exportN2D2 IP only

Test the exported network:

cd export_C_HLS_int8

make

./bin/n2d2_test

Run the High-Level Synthesis (HLS) with Xilinx®Vivado®HLS:

vivado_hls -f run_hls.tcl

20/82

4 INI file interface

The INI file interface is the primary way of using N2D2. It is a simple, lightweight and user-friendly

format for specifying a complete DNN-based application, including dataset instanciation, data

pre-processing, neural network layers instanciation and post-processing, with all its hyperparameters.

4.1 Syntax

INI files are simple text files with a basic structure composed of sections, properties and values.

4.1.1 Properties

The basic element contained in an INI file is the property. Every property has a name and a value,

delimited by an equals sign (=). The name appears to the left of the equals sign.

name=value

4.1.2 Sections

Properties may be grouped into arbitrarily named sections. The section name appears on a line

by itself, in square brackets ([ and ]). All properties after the section declaration are associated

with that section. There is no explicit "end of section" delimiter; sections end at the next section

declaration, or the end of the file. Sections may not be nested.

[section]

a=a

b=b

4.1.3 Case sensitivity

Section and property names are case sensitive.

4.1.4 Comments

Semicolons (

;

) or number sign (

#

) at the beginning or in the middle of the line indicate a comment.

Comments are ignored.

; comment text

a=a # comment text

a="a ; not a comment" ; comment text

4.1.5 Quoted values

Values can be quoted, using double quotes. This allows for explicit declaration of whitespace,

and/or for quoting of special characters (equals, semicolon, etc.).

4.1.6 Whitespace

Leading and trailing whitespace on a line are ignored.

4.1.7 Escape characters

A backslash (\) followed immediately by EOL (end-of-line) causes the line break to be ignored.

21/82

4.2 Template inclusion syntax

Is is possible to recursively include templated INI files. For example, the main INI file can include

a templated file like the following:

[inception@inception_model.ini.tpl]

INPUT=layer_x

SIZE=32

ARRAY=2 ; Must be the number of elements in the array

ARRAY[0].P1=Conv

ARRAY[0].P2=32

ARRAY[1].P1=Pool

ARRAY[1].P2=64

If the inception_model.ini.tpl template file content is:

[{{SECTION_NAME}}_layer1]

Input={{INPUT}}

Type=Conv

NbOutputs={{SIZE}}

[{{SECTION_NAME}}_layer2]

Input={{SECTION_NAME}}_layer1

Type=Fc

NbOutputs={{SIZE}}

{% block ARRAY %}

[{{SECTION_NAME}}_array{{#}}]

Prop1=Config{{.P1}}

Prop2={{.P2}}

{% endblock %}

The resulting equivalent content for the main INI file will be:

[inception_layer1]

Input=layer_x

Type=Conv

NbOutputs=32

[inception_layer2]

Input=inception_layer1

Type=Fc

NbOutputs=32

[inception_array0]

Prop1=ConfigConv

Prop2=32

[inception_array1]

Prop1=ConfigPool

Prop2=64

The

SECTION_NAME

template parameter is automatically generated from the name of the including

section (before @).

4.2.1 Variable substitution

{{VAR}} is replaced by the value of the VAR template parameter.

4.2.2 Control statements

Control statements are between {% and %} delimiters.

22/82

block {%block ARRAY %} ... {%endblock %}

The

#

template parameter is automatically generated from the

{%block ... %}

template control

statement and corresponds to the current item position, starting from 0.

for {%for VAR in range([START, ]END])%} ... {%endfor %}

If START is not specified, the loop begins at 0 (first value of VAR). The last value of VAR is END-1.

if {%if VAR OP [VALUE] %} ... [{%else %}] ... {%endif %}

OP may be ==,!=,exists or not_exists.

include {%include FILENAME %}

4.3 Global parameters

Option [default value] Description

DefaultModel [Transcode]

Default layers model. Can be

Frame

,

Frame_CUDA

,

Transcode

or

Spike

DefaultDataType [Float32]

Default layers data type. Can be

Float16

,

Float32

or

Float64

SignalsDiscretization [0] Number of levels for signal discretization

FreeParametersDiscretization

[0]

Number of levels for weights discretization

4.4 Databases

The tool integrates pre-defined modules for several well-known database used in the deep learning

community, such as MNIST, GTSRB, CIFAR10 and so on. That way, no extra step is necessary to

be able to directly build a network and learn it on these database.

4.4.1 MNIST

MNIST (LeCun et al.,1998) is already fractionned into a learning set and a testing set, with:

•60,000 digits in the learning set;

•10,000 digits in the testing set.

Example:

[database]

Type=MNIST_IDX_Database

Validation=0.2 ; Fraction of learning stimuli used for the validation [default: 0.0]

Option [default value] Description

Validation [0.0] Fraction of the learning set used for validation

DataPath Path to the database

[$N2D2_DATA/mnist]

4.4.2 GTSRB

GTSRB (Stallkamp et al.,2012) is already fractionned into a learning set and a testing set, with:

•39,209 digits in the learning set;

•12,630 digits in the testing set.

Example:

23/82

[database]

Type=GTSRB_DIR_Database

Validation=0.2 ; Fraction of learning stimuli used for the validation [default: 0.0]

Option [default value] Description

Validation [0.0] Fraction of the learning set used for validation

DataPath Path to the database

[$N2D2_DATA/GTSRB]

4.4.3 Directory

Hand made database stored in files directories are directly supported with the

DIR_Database

module.

For example, suppose your database is organized as following (in the path specified in the

N2D2_DATA

environment variable):

•GST/airplanes: 800 images

•GST/car_side: 123 images

•GST/Faces: 435 images

•GST/Motorbikes: 798 images

You can then instanciate this database as input of your neural network using the following

parameters:

[database]

Type=DIR_Database

DataPath=${N2D2_DATA}/GST

Learn=0.4 ; 40% of images of the smallest category = 49 (0.4x123) images for each category will be

used for learning

Validation=0.2 ; 20% of images of the smallest category = 25 (0.2x123) images for each category

will be used for validation

; the remaining images will be used for testing

Each subdirectory will be treated as a different label, so there will be 4 different labels, named

after the directory name.

The stimuli are equi-partitioned for the learning set and the validation set, meaning that the

same number of stimuli for each category is used. If the learn fraction is 0.4 and the validation

fraction is 0.2, as in the example above, the partitioning will be the following:

Label ID Label name Learn set Validation set Test set

0airplanes 49 25 726

1car_side 49 25 49

2Faces 49 25 361

3Motorbikes 49 25 724

Total: 196 100 1860

Mandatory option

Option [default value] Description

DataPath Path to the root stimuli directory

Learn

If

PerLabelPartitioning

is true, fraction of images used for

the learning; else, number of images used for the learning,

regardless of their labels

LoadInMemory [0] Load the whole database into memory

Depth [1] Number of sub-directory levels to include. Examples:

24/82

Depth

= 0: load stimuli only from the current directory

(DataPath)

Depth

= 1: load stimuli from

DataPath

and stimuli contained

in the sub-directories of DataPath

Depth

< 0: load stimuli recursively from

DataPath

and all its

sub-directories

LabelName [] Base stimuli label name

LabelDepth [1]

Number of sub-directory name levels used to form the stimuli

labels. Examples:

LabelDepth = -1: no label for all stimuli (label ID = -1)

LabelDepth = 0: uses LabelName for all stimuli

LabelDepth

= 1: uses

LabelName

for stimuli in the current

directory (

DataPath

) and

LabelName

/sub-directory name for

stimuli in the sub-directories

PerLabelPartitioning [1]

If true, the stimuli are equi-partitioned for the learn/valida-

tion/test sets, meaning that the same number of stimuli for

each label is used

Validation [0.0]

If

PerLabelPartitioning

is true, fraction of images used for the

validation; else, number of images used for the validation,

regardless of their labels

Test [1.0-Learn-Validation]

If

PerLabelPartitioning

is true, fraction of images used for the

test; else, number of images used for the test, regardless of

their labels

ValidExtensions []

List of space-separated valid stimulus file extensions (if left

empty, any file extension is considered a valid stimulus)

LoadMore []

Name of an other section with the same options to load a

different DataPath

ROIFile []

File containing the stimuli ROIs. If a ROI file is specified,

LabelDepth should be set to -1

DefaultLabel []

Label name for pixels outside any ROI (default is no label,

pixels are ignored)

ROIsMargin [0]

Number of pixels around ROIs that are ignored (and not

considered as DefaultLabel pixels)

To load and partition more than one DataPath, one can use the LoadMore option:

[database]

Type=DIR_Database

DataPath=${N2D2_DATA}/GST

Learn=0.6

Validation=0.4

LoadMore=database.test

; Load stimuli from the "GST_Test" path in the test dataset

[database.test]

DataPath=${N2D2_DATA}/GST_Test

Learn=0.0

Test=1.0

; The LoadMore option is recursive:

; LoadMore=database.more

; [database.more]

; Load even more data here

25/82

4.4.4 Other built-in databases

CIFAR10_Database CIFAR10 database (Krizhevsky,2009).

Option [default value] Description

Validation [0.0] Fraction of the learning set used for validation

DataPath Path to the database

[

$N2D2_DATA

/cifar-10-batches-

bin]

CIFAR100_Database CIFAR100 database (Krizhevsky,2009).

Option [default value] Description

Validation [0.0] Fraction of the learning set used for validation

UseCoarse [0] If true, use the coarse labeling (10 labels instead of 100)

DataPath Path to the database

[$N2D2_DATA/cifar-100-binary]

CKP_Database

The Extended Cohn-Kanade (CK+) database for expression recognition (Lucey

et al.,2010).

Option [default value] Description

Learn Fraction of images used for the learning

Validation [0.0] Fraction of images used for the validation

DataPath Path to the database

[

$N2D2_DATA

/cohn-kanade-

images]

Caltech101_DIR_Database Caltech 101 database (Fei-Fei et al.,2004).

Option [default value] Description

Learn Fraction of images used for the learning

Validation [0.0] Fraction of images used for the validation

IncClutter [0]

If true, includes the BACKGROUND_Google directory of

the database

DataPath Path to the database

[$N2D2_DATA/

101_ObjectCategories]

Caltech256_DIR_Database Caltech 256 database (Griffin et al.,2007).

Option [default value] Description

Learn Fraction of images used for the learning

Validation [0.0] Fraction of images used for the validation

IncClutter [0]

If true, includes the BACKGROUND_Google directory of

the database

DataPath Path to the database

[$N2D2_DATA/

256_ObjectCategories]

26/82

CaltechPedestrian_Database Caltech Pedestrian database (Dollár et al.,2009).

Note that the images and annotations must first be extracted from the seq video data located in

the videos directory using the

dbExtract.m

Matlab tool provided in the "Matlab evaluation/labeling

code" downloadable on the dataset website.

Assuming the following directory structure (in the path specified in the

N2D2_DATA

environment

variable):

•CaltechPedestrians/data-USA/videos/... (from the setxx.tar files)

•CaltechPedestrians/data-USA/annotations/... (from the setxx.tar files)

•CaltechPedestrians/tools/piotr_toolbox/toolbox (from the Piotr’s Matlab Toolbox archive)

•CaltechPedestrians/*.m including dbExtract.m (from the Matlab evaluation/labeling code)

Use the following command in Matlab to generate the images and annotations:

cd([getenv(’N2D2_DATA’)’/CaltechPedestrians’])

addpath(genpath(’tools/piotr_toolbox/toolbox’)) % add the Piotr’s Matlab Toolbox in the Matlab

path

dbInfo(’USA’)

dbExtract()

Option [default value] Description

Validation [0.0] Fraction of the learning set used for validation

SingleLabel [1] Use the same label for "person" and "people" bounding box

IncAmbiguous [0]

Include ambiguous bounding box labeled "person?" using the

same label as "person"

DataPath Path to the database images

[$N2D2_DATA/

CaltechPedestrians/data-

USA/images]

LabelPath Path to the database annotations

[$N2D2_DATA/

CaltechPedestrians/data-

USA/annotations]

Cityscapes_Database Cityscapes database (Cordts et al.,2016).

Option [default value] Description

IncTrainExtra [0]

If true, includes the left 8-bit images - trainextra set (19,998

images)

UseCoarse [0]

If true, only use coarse annotations (which are the only

annotations available for the trainextra set)

SingleInstanceLabels [1]

If true, convert group labels to single instance labels (for

example, cargroup becomes car)

DataPath Path to the database images

[$N2D2_DATA/

Cityscapes/leftImg8bit] or

[

$CITYSCAPES_DATASET

] if defined

LabelPath []

Path to the database annotations (deduced from

DataPath

if

left empty)

Daimler_Database Daimler Monocular Pedestrian Detection Benchmark (Daimler Pedestrian).

27/82

Option [default value] Description

Learn [1.0] Fraction of images used for the learning

Validation [0.0] Fraction of images used for the validation

Test [0.0] Fraction of images used for the test

Fully [0]

When activate it use the test dataset to learn. Use only on

fully-cnn mode

DOTA_Database DOTA database (Xia et al.,2017).

Option [default value] Description

Learn Fraction of images used for the learning

DataPath Path to the database

[$N2D2_DATA/DOTA]

LabelPath Path to the database labels list file

[]

FDDB_Database

Face Detection Data Set and Benchmark (FDDB) (Jain and Learned-Miller,

2010).

Option [default value] Description

Learn Fraction of images used for the learning

Validation [0.0] Fraction of images used for the validation

DataPath Path to the images (decompressed originalPics.tar.gz)

[$N2D2_DATA/FDDB]

LabelPath Path to the annotations (decompressed FDDB-folds.tgz)

[$N2D2_DATA/FDDB]

GTSDB_DIR_Database GTSDB database (Houben et al.,2013).

Option [default value] Description

Learn Fraction of images used for the learning

Validation [0.0] Fraction of images used for the validation

DataPath Path to the database

[$N2D2_DATA/FullIJCNN2013]

ILSVRC2012_Database ILSVRC2012 database (Russakovsky et al.,2015).

Option [default value] Description

Learn Fraction of images used for the learning

DataPath Path to the database

[$N2D2_DATA/ILSVRC2012]

LabelPath Path to the database labels list file

[

$N2D2_DATA

/ILSVRC2012/synsets.txt]

KITTI_Database

The KITTI Database provide ROI which can be use for autonomous driving and

environment perception. The database provide 8 labeled different classes. Utilization of the KITTI

Database is under licensing conditions and request an email registration. To install it you have to

follow this link:

http://www.cvlibs.net/datasets/kitti/eval_tracking.php

and download

the left color images (15 GB) and the trainling labels of tracking data set (9 MB). Extract the

downloaded archives in your $N2D2_DATA/KITTI folder.

28/82

Option [default value] Description

Learn [0.8] Fraction of images used for the learning

Validation [0.2] Fraction of images used for the validation

KITTI_Road_Database

The KITTI Road Database provide ROI which can be used to road

segmentation. The dataset provide 1 labeled class (road) on 289 training images. The 290 test

images are not labeled. Utilization of the KITTI Road Database is under licensing conditions and

request an email registration. To install it you have to follow this link:

http://www.cvlibs.net/

datasets/kitti/eval_road.php

and download the "base kit" of (0.5 GB) with left color images,

calibration and training labels. Extract the downloaded archive in your

$N2D2_DATA/KITTI

folder.

Option [default value] Description

Learn [0.8] Fraction of images used for the learning

Validation [0.2] Fraction of images used for the validation

KITTI_Object_Database

The KITTI Object Database provide ROI which can be use for au-

tonomous driving and environment perception. The database provide 8 labeled different classes

on 7481 training images. The 7518 test images are not labeled. The whole database pro-

vide 80256 labeled objects. Utilization of the KITTI Object Database is under licensing con-

ditions and request an email registration. To install it you have to follow this link:

http:

//www.cvlibs.net/datasets/kitti/eval_object.php?obj_benchmark

and download the "lef

color images" (12 GB) and the training labels of object data set (5 MB). Extract the downloaded

archives in your $N2D2_DATA/KITTI_Object folder.

Option [default value] Description

Learn [0.8] Fraction of images used for the learning

Validation [0.2] Fraction of images used for the validation

LITISRouen_Database LITIS Rouen audio scene dataset (Rakotomamonjy and Gasso,2014).

Option [default value] Description

Learn [0.4] Fraction of images used for the learning

Validation [0.4] Fraction of images used for the validation

DataPath Path to the database

[$N2D2_DATA/data_rouen]

4.4.5 Dataset images slicing

It is possible to automatically slice images from a dataset, with a given slice size and stride, using

the .slicing attribute. This effectively increases the number of stimuli in the set.

[database.slicing]

ApplyTo=NoLearn

Width=2048

Height=1024

StrideX=2048

StrideY=1024

RandomShuffle=1 ; 1 is the default value

The

RandomShuffle

option, enabled by default, randomly shuffle the dataset after slicing. If

disabled, the slices are added in order at the end of the dataset.

29/82

4.5 Stimuli data analysis

You can enable stimuli data reporting with the following section (the name of the section must

start with env.StimuliData):

[env.StimuliData-raw]

ApplyTo=LearnOnly

LogSizeRange=1

LogValueRange=1

The stimuli data reported for the full MNIST learning set will look like:

env . StimuliData−raw dat a :

Number of s t i m u l i : 60000

Data w i d t h r a n ge : [ 2 8 , 2 8 ]

Data h e i g h t r an ge : [ 2 8 , 2 8]

Data c h a n n e l s r an ge : [ 1 , 1 ]

Val ue r a ng e : [ 0 , 2 5 5 ]

Value mean : 3 3. 3 18 4

Value s t d . dev . : 78 .5 675

4.5.1 Zero-mean and unity standard deviation normalization

It it possible to normalize the whole database to have zero mean and unity standard deviation on

the learning set using a RangeAffineTransformation transformation:

; Stimuli normalization based on learning set global mean and std.dev.

[env.Transformation-normalize]

Type=RangeAffineTransformation

FirstOperator=Minus

FirstValue=[env.StimuliData-raw]_GlobalValue.mean

SecondOperator=Divides

SecondValue=[env.StimuliData-raw]_GlobalValue.stdDev

The variables

_GlobalValue.mean

and

_GlobalValue.stdDev

are automatically generated in the

[env.

StimuliData-raw]

block. Thanks to this facility, unknown and arbitrary database can be analysed

and normalized in one single step without requiring any external data manipulation.

After normalization, the stimuli data reported is:

env . StimuliData−n o r m a l i z e d d a t a :

Number of s t i m u l i : 60000

Data w i d t h r a n ge : [ 2 8 , 2 8 ]

Data h e i g h t r an ge : [ 2 8 , 2 8]

Data c h a n n e l s r an ge : [ 1 , 1 ]

Value ra ng e : [ −0 .424 074 , 2 . 8 2 1 5 4 ]

Value mean : 2 . 64 79 6 e−07

Value s t d . dev . : 1

Where we can check that the global mean is close to 0 and the standard deviation is 1 on the

whole dataset. The result of the transformation on the first images of the set can be checked in the

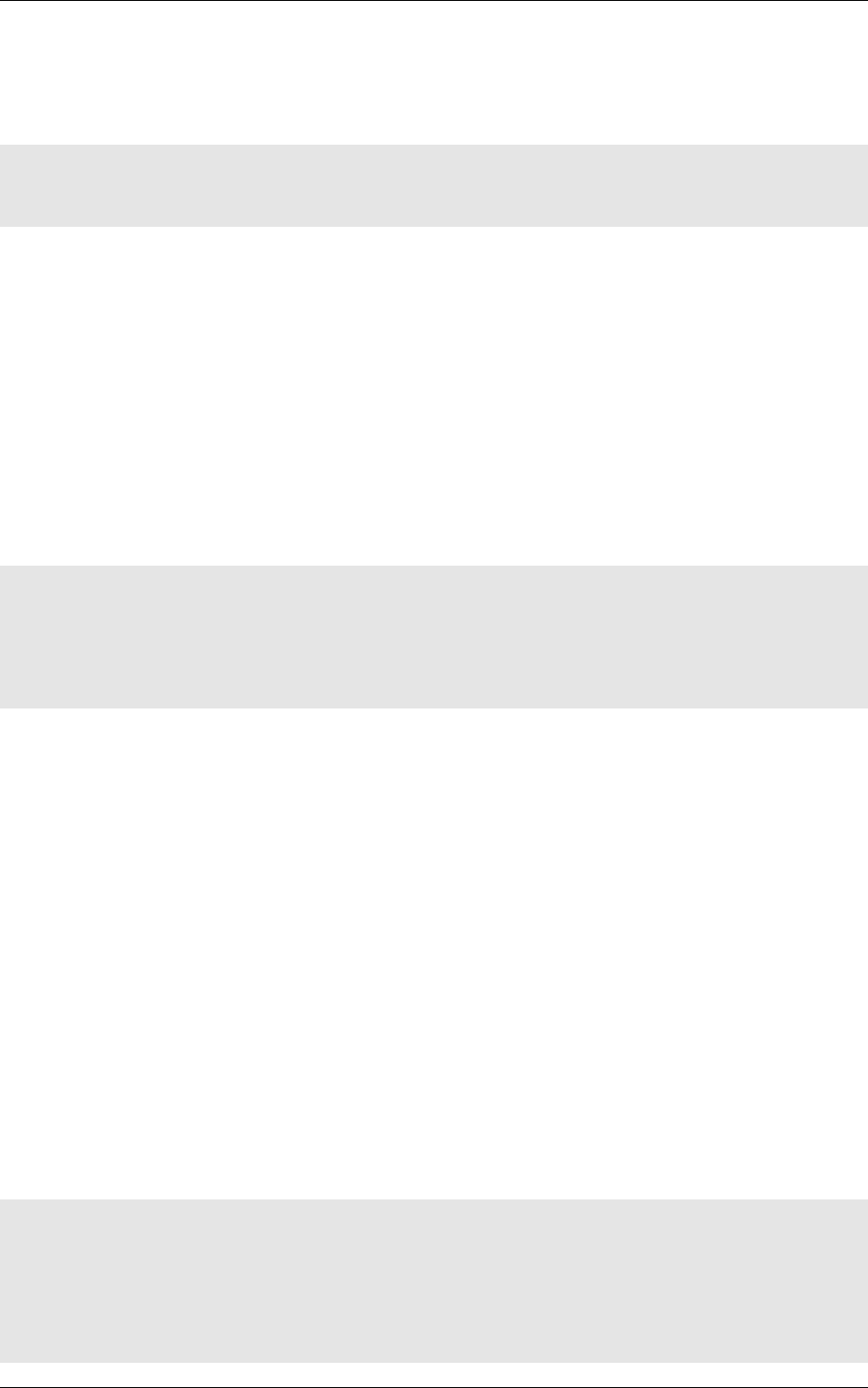

generated frames folder, as shown in figure 9.

4.5.2 Substracting the mean image of the set

Using the

StimuliData

object followed with an

AffineTransformation

, it is also possible to use the

mean image of the dataset to normalize the data:

[env.StimuliData-meanData]

ApplyTo=LearnOnly

MeanData=1 ; Provides the _MeanData parameter used in the transformation

[env.Transformation]

Type=AffineTransformation

FirstOperator=Minus

FirstValue=[env.StimuliData-meanData]_MeanData

30/82

Figure 9: Image of the set after normalization.

The resulting global mean image can be visualized in env.StimuliData-meanData/meanData.bin.png

an is shown in figure 10.

Figure 10: Global mean image generated by StimuliData with the MeanData parameter enabled.

After this transformation, the reported stimuli data becomes:

env . StimuliData−p r o c e s s e d d a ta :

31/82

Number of s t i m u l i : 60000

Data w i d t h r a n ge : [ 2 8 , 2 8 ]

Data h e i g h t r an ge : [ 2 8 , 2 8]

Data c h a n n e l s r an ge : [ 1 , 1 ]

Value ra ng e : [ −1 39.5 54 , 2 5 4 . 9 7 9 ]

Value mean : −3.45583 e −08

Value s t d . dev . : 66 .1 288

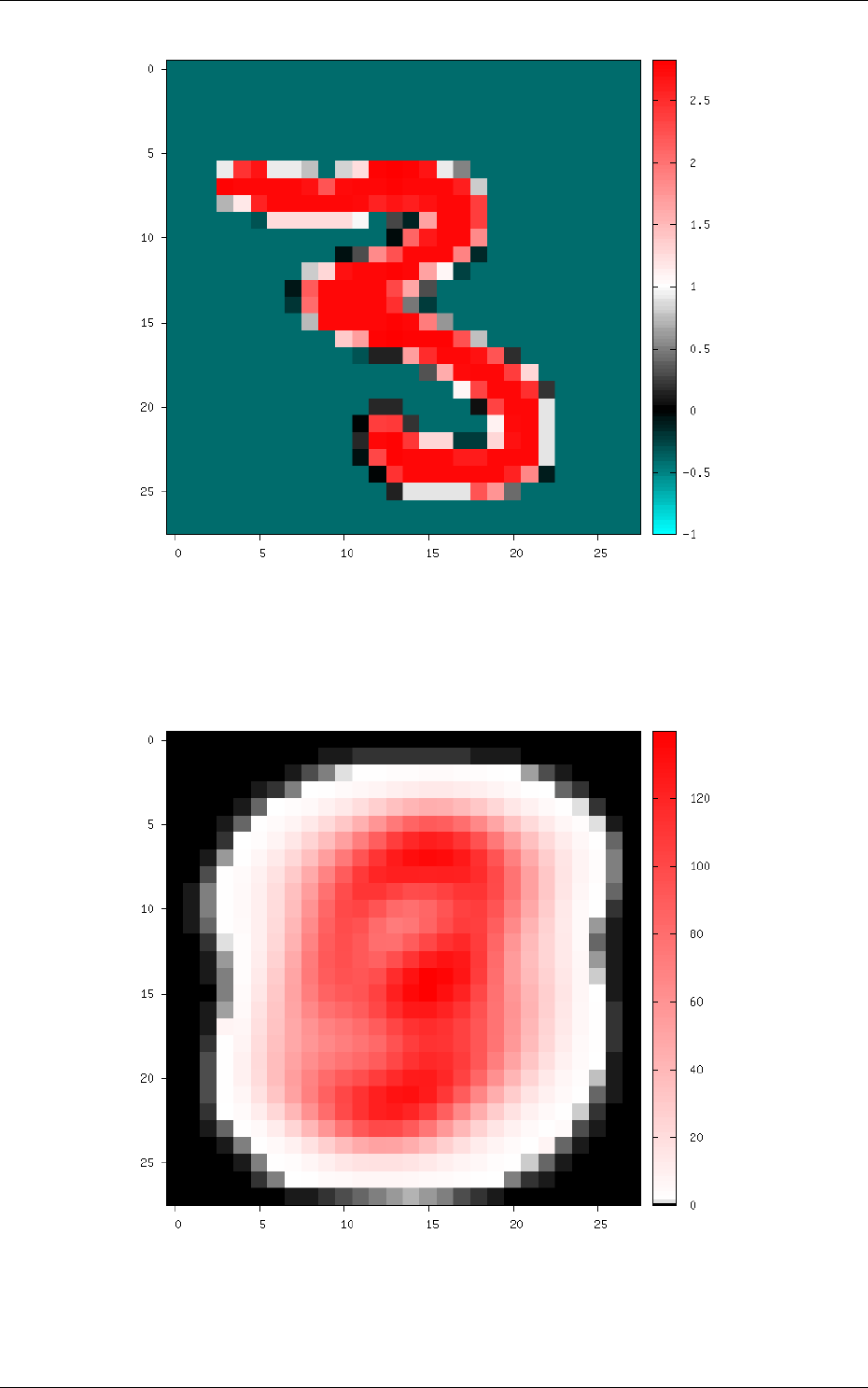

The result of the transformation on the first images of the set can be checked in the generated

frames folder, as shown in figure 11.

Figure 11: Image of the set after the

AffineTransformation

substracting the global mean image (keep in

mind that the original image value range is [0, 255]).

4.6 Environment

The environment simply specify the input data format of the network (width, height and batch

size). Example:

[env]

SizeX=24

SizeY=24

BatchSize=12 ; [default: 1]

Option [default value] Description

SizeX Environment width

SizeY Environment height

NbChannels [1]

Number of channels (applicable only if there is no

env.

ChannelTransformation[...])

BatchSize [1] Batch size

CompositeStimuli [0] If true, use pixel-wise stimuli labels

CachePath [] Stimuli cache path (no cache if left empty)

32/82

StimulusType [SingleBurst]

Method for converting stimuli into spike trains. Can be any

of SingleBurst,Periodic,JitteredPeriodic or Poissonian

DiscardedLateStimuli [1.0]

The pixels in the pre-processed stimuli with a value above

this limit never generate spiking events

PeriodMeanMin [50 TimeMs]

Mean minimum period

Tmin

, used for periodic temporal cod-

ings, corresponding to pixels in the pre-processed stimuli with

a value of 0 (which are supposed to be the most significant

pixels)

PeriodMeanMax [12 TimeS]

Mean maximum period

Tmax

, used for periodic temporal

codings, corresponding to pixels in the pre-processed stimuli

with a value of 1 (which are supposed to be the least signifi-

cant pixels). This maximum period may be never reached if

DiscardedLateStimuli is lower than 1.0

PeriodRelStdDev [0.1]

Relative standard deviation, used for periodic temporal cod-

ings, applied to the spiking period of a pixel

PeriodMin [11 TimeMs]

Absolute minimum period, or spiking interval, used for peri-

odic temporal codings, for any pixel

4.6.1 Built-in transformations

There are 6 possible categories of transformations:

•env.Transformation[...]

Transformations applied to the input images before channels creation;

•env.OnTheFlyTransformation[...]

On-the-fly transformations applied to the input images before

channels creation;

•env.ChannelTransformation[...] Create or add transformation for a specific channel;

•env.ChannelOnTheFlyTransformation[...]

Create or add on-the-fly transformation for a specific

channel;

•env.ChannelsTransformation[...]

Transformations applied to all the channels of the input

images;

•env.ChannelsOnTheFlyTransformation[...]

On-the-fly transformations applied to all the channels

of the input images.

Example:

[env.Transformation]

Type=PadCropTransformation

Width=24

Height=24

Several transformations can applied successively. In this case, to be able to apply multiple

transformations of the same category, a different suffix (

[...]

) must be added to each transformation.

The transformations will be processed in the order of appearance in the INI file

regardless of their suffix.

Common set of parameters for any kind of transformation:

Option [default value] Description

ApplyTo [All]

Apply the transformation only to the specified stimuli sets.

Can be:

LearnOnly: learning set only

ValidationOnly: validation set only

TestOnly: testing set only

33/82

NoLearn: validation and testing sets only

NoValidation: learning and testing sets only

NoTest: learning and validation sets only

All: all sets (default)

Example:

[env.Transformation-1]

Type=ChannelExtractionTransformation

CSChannel=Gray

[env.Transformation-2]

Type=RescaleTransformation

Width=29

Height=29

[env.Transformation-3]

Type=EqualizeTransformation

[env.OnTheFlyTransformation]

Type=DistortionTransformation

ApplyTo=LearnOnly ; Apply this transformation for the Learning set only

ElasticGaussianSize=21

ElasticSigma=6.0

ElasticScaling=20.0

Scaling=15.0

Rotation=15.0

List of available transformations:

AffineTransformation

Apply an element-wise affine transformation to the image with matrixes

of the same size.

Option [default value] Description

FirstOperator

First element-wise operator, can be

Plus

,

Minus

,

Multiplies

,

Divides

FirstValue First matrix file name

SecondOperator [Plus]

Second element-wise operator, can be

Plus

,

Minus

,

Multiplies

,

Divides

SecondValue [] Second matrix file name

The final operation is the following, with

A

the image matrix,

B1st

,

B2nd

the matrixes to

add/substract/multiply/divide and the element-wise operator :

f(A) = A

op1st B1st

op2nd B2nd

ApodizationTransformation Apply an apodization window to each data row.

Option [default value] Description

Size

Window total size (must match the number of data columns)

WindowName [Rectangular] Window name. Possible values are:

Rectangular: Rectangular

Hann: Hann

Hamming: Hamming

Cosine: Cosine

Gaussian: Gaussian

Blackman: Blackman

Kaiser: Kaiser

34/82

Gaussian window Gaussian window.

Option [default value] Description

WindowName

.Sigma

[0.4] Sigma

Blackman window Blackman window.

Option [default value] Description

WindowName

.Alpha

[0.16] Alpha

Kaiser window Kaiser window.

Option [default value] Description

WindowName

.Beta

[5.0]

Beta

ChannelExtractionTransformation Extract an image channel.

Option Description

CSChannel Blue

: blue channel in the BGR colorspace, or first channel of

any colorspace

Green

: green channel in the BGR colorspace, or second chan-

nel of any colorspace

Red

: red channel in the BGR colorspace, or third channel of

any colorspace

Hue: hue channel in the HSV colorspace

Saturation: saturation channel in the HSV colorspace

Value: value channel in the HSV colorspace

Gray: gray conversion

Y: Y channel in the YCbCr colorspace

Cb: Cb channel in the YCbCr colorspace

Cr: Cr channel in the YCbCr colorspace

ColorSpaceTransformation Change the current image colorspace.

35/82

Option Description

ColorSpace BGR: convert any gray, BGR or BGRA image to BGR

RGB: convert any gray, BGR or BGRA image to RGB

HSV: convert BGR image to HSV

HLS: convert BGR image to HLS

YCrCb: convert BGR image to YCrCb

CIELab: convert BGR image to CIELab

CIELuv: convert BGR image to CIELuv

RGB_to_BGR: convert RGB image to BGR

RGB_to_HSV: convert RGB image to HSV

RGB_to_HLS: convert RGB image to HLS

RGB_to_YCrCb: convert RGB image to YCrCb

RGB_to_CIELab: convert RGB image to CIELab

RGB_to_CIELuv: convert RGB image to CIELuv

HSV_to_BGR: convert HSV image to BGR

HSV_to_RGB: convert HSV image to RGB

HLS_to_BGR: convert HLS image to BGR

HLS_to_RGB: convert HLS image to RGB

YCrCb_to_BGR: convert YCrCb image to BGR

YCrCb_to_RGB: convert YCrCb image to RGB

CIELab_to_BGR: convert CIELab image to BGR

CIELab_to_RGB: convert CIELab image to RGB

CIELuv_to_BGR: convert CIELuv image to BGR

CIELuv_to_RGB: convert CIELuv image to RGB

Note that the default colorspace in N2D2 is BGR, the same as in OpenCV.

DFTTransformation

Apply a DFT to the data. The input data must be single channel, the

resulting data is two channels, the first for the real part and the second for the imaginary part.

Option [default value] Description

TwoDimensional [1]

If true, compute a 2D image DFT. Otherwise, compute the

1D DFT of each data row

Note that this transformation can add zero-padding if required by the underlying FFT imple-

mentation.

DistortionTransformationN2D2 IP only

Apply elastic distortion to the image. This transformation is gener-

ally used on-the-fly (so that a different distortion is performed for each image), and for the learning

only.

Option [default value] Description

ElasticGaussianSize [15] Size of the gaussian for elastic distortion (in pixels)

ElasticSigma [6.0] Sigma of the gaussian for elastic distortion

ElasticScaling [0.0] Scaling of the gaussian for elastic distortion

Scaling [0.0] Maximum random scaling amplitude (+/-, in percentage)

Rotation [0.0] Maximum random rotation amplitude (+/-, in °)

EqualizeTransformationN2D2 IP only Image histogram equalization.

36/82

Option [default value] Description

Method [Standard]Standard: standard histogram equalization

CLAHE: contrast limited adaptive histogram equalization

CLAHE_ClipLimit [40.0] Threshold for contrast limiting (for CLAHE only)

CLAHE_GridSize [8]

Size of grid for histogram equalization (for

CLAHE

only). Input

image will be divided into equally sized rectangular tiles. This

parameter defines the number of tiles in row and column.

ExpandLabelTransformationN2D2 IP only Expand single image label (1x1 pixel) to full frame label.

FilterTransformation Apply a convolution filter to the image.

Option [default value] Description

Kernel Convolution kernel. Possible values are:

*: custom kernel

Gaussian: Gaussian kernel

LoG: Laplacian Of Gaussian kernel

DoG: Difference Of Gaussian kernel

Gabor: Gabor kernel

*kernel Custom kernel.

Option Description

Kernel.SizeX [0] Width of the kernel (numer of columns)

Kernel.SizeY [0] Height of the kernel (number of rows)

Kernel.Mat

List of row-major ordered coefficients of

the kernel

If both Kernel.SizeX and Kernel.SizeY are 0, the kernel is assumed to be square.

Gaussian kernel Gaussian kernel.

Option [default value] Description

Kernel.SizeX Width of the kernel (numer of columns)

Kernel.SizeY Height of the kernel (number of rows)

Kernel.Positive [1]

If true, the center of the kernel is positive

Kernel.Sigma [√2.0]Sigma of the kernel

LoG kernel Laplacian Of Gaussian kernel.

Option [default value] Description

Kernel.SizeX Width of the kernel (numer of columns)

Kernel.SizeY Height of the kernel (number of rows)

Kernel.Positive [1]

If true, the center of the kernel is positive

Kernel.Sigma [√2.0]Sigma of the kernel

DoG kernel Difference Of Gaussian kernel kernel.

Option [default value] Description

Kernel.SizeX Width of the kernel (numer of columns)

Kernel.SizeY Height of the kernel (number of rows)

Kernel.Positive [1]

If true, the center of the kernel is positive

Kernel.Sigma1 [2.0] Sigma1 of the kernel

Kernel.Sigma2 [1.0] Sigma2 of the kernel