

GlassFish Server Open Source Edition

Performance Tuning Guide

Release 4.0

May 2013

This book describes how to get the best performance with GlassFish Server 4.0.

GlassFish Server Open Source Edition Performance Tuning Guide, Release 4.0

Copyright © 2013, Oracle and/or its affiliates. All rights reserved.

This software and related documentation are provided under a license agreement containing restrictions on use and disclosure and are protected by intellectual property laws. Except as expressly permitted in your license agreement or allowed by law, you may not use, copy, reproduce, translate, broadcast, modify, license, transmit, distribute, exhibit, perform, publish, or display any part, in any form, or by any means. Reverse engineering, disassembly, or decompilation of this software, unless required by law for interoperability, is prohibited.

The information contained herein is subject to change without notice and is not warranted to be error-free. If you find any errors, please report them to us in writing.

If this is software or related documentation that is delivered to the U.S. Government or anyone licensing it on behalf of the U.S. Government, the following notice is applicable:

U.S. GOVERNMENT RIGHTS Programs, software, databases, and related documentation and technical data delivered to U.S. Government customers are "commercial computer software" or "commercial technical data" pursuant to the applicable Federal Acquisition Regulation and agency-specific supplemental regulations. As such, the use, duplication, disclosure, modification, and adaptation shall be subject to the restrictions and license terms set forth in the applicable Government contract, and, to the extent applicable by the terms of the Government contract, the additional rights set forth in FAR 52.227-19, Commercial Computer Software License (December 2007). Oracle America, Inc., 500 Oracle Parkway, Redwood City, CA 94065.

This software or hardware is developed for general use in a variety of information management applications. It is not developed or intended for use in any inherently dangerous applications, including applications that may create a risk of personal injury. If you use this software or hardware in dangerous applications, then you shall be responsible to take all appropriate fail-safe, backup, redundancy, and other measures to ensure its safe use. Oracle Corporation and its affiliates disclaim any liability for any damages caused by use of this software or hardware in dangerous applications.

Oracle and Java are registered trademarks of Oracle and/or its affiliates. Other names may be trademarks of their respective owners.

Intel and Intel Xeon are trademarks or registered trademarks of Intel Corporation. All SPARC trademarks are used under license and are trademarks or registered trademarks of SPARC International, Inc. AMD, Opteron, the AMD logo, and the AMD Opteron logo are trademarks or registered trademarks of Advanced Micro Devices. UNIX is a registered trademark of The Open Group.

This software or hardware and documentation may provide access to or information on content, products, and services from third parties. Oracle Corporation and its affiliates are not responsible for and expressly disclaim all warranties of any kind with respect to third-party content, products, and services. Oracle Corporation and its affiliates will not be responsible for any loss, costs, or damages incurred due to your access to or use of third-party content, products, or services.

Contents

- Preface
- 1 Overview of GlassFish Server Performance Tuning
  - Process Overview
    - Performance Tuning Sequence
  - Understanding Operational Requirements
    - Application Architecture
    - Security Requirements
    - High Availability Clustering, Load Balancing, and Failover
    - Hardware Resources
    - Administration
  - General Tuning Concepts
    - Capacity Planning
    - User Expectations
  - Further Information
- 2 Tuning Your Application
  - Java Programming Guidelines
    - Avoid Serialization and Deserialization
    - Use StringBuilder to Concatenate Strings
    - Assign null to Variables That Are No Longer Needed
    - Declare Methods as final Only If Necessary
    - Declare Constants as static final
    - Avoid Finalizers
    - Declare Method Arguments final
    - Synchronize Only When Necessary
    - Use DataHandlers for SOAP Attachments
  - Java Server Page and Servlet Tuning
    - Suggested Coding Practices
  - EJB Performance Tuning
    - Goals
    - Monitoring EJB Components
    - General Guidelines
    - Using Local and Remote Interfaces
    - Improving Performance of EJB Transactions
    - Using Special Techniques
    - Tuning Tips for Specific Types of EJB Components
    - JDBC and Database Access
    - Tuning Message-Driven Beans
- 3 Tuning the GlassFish Server
  - Using the GlassFish Server Performance Tuner
  - Deployment Settings
    - Disable Auto-Deployment
    - Use Pre-compiled JavaServer Pages
    - Disable Dynamic Application Reloading
  - Logger Settings
    - General Settings
    - Log Levels
  - Web Container Settings
    - Session Properties: Session Timeout
    - Manager Properties: Reap Interval
    - Disable Dynamic JSP Reloading
  - EJB Container Settings
    - Monitoring the EJB Container
    - Tuning the EJB Container
  - Java Message Service Settings
  - Transaction Service Settings
    - Monitoring the Transaction Service
    - Tuning the Transaction Service
  - HTTP Service Settings
    - Monitoring the HTTP Service
    - HTTP Service Access Logging
  - Network Listener Settings
    - General Settings
    - HTTP Settings
    - File Cache Settings
  - Transport Settings
  - Thread Pool Settings
    - Max Thread Pool Size
    - Min Thread Pool Size
  - ORB Settings
    - Overview
    - How a Client Connects to the ORB
    - Monitoring the ORB
    - Tuning the ORB
  - Resource Settings
    - JDBC Connection Pool Settings
    - Connector Connection Pool Settings
  - Load Balancer Settings
- 4 Tuning the Java Runtime System
  - Java Virtual Machine Settings
  - Start Options
  - Tuning High Availability Persistence
  - Managing Memory and Garbage Collection
    - Tuning the Garbage Collector
    - Tracing Garbage Collection
    - Other Garbage Collector Settings
    - Tuning the Java Heap
    - Rebasing DLLs on Windows
  - Further Information
- 5 Tuning the Operating System and Platform
  - Server Scaling
    - Processors
    - Memory
    - Disk Space
    - Networking
    - UDP Buffer Sizes
  - Solaris 10 Platform-Specific Tuning Information
  - Tuning for the Solaris OS
    - Tuning Parameters
    - File Descriptor Setting
  - Tuning for Solaris on x86
    - File Descriptors
    - IP Stack Settings
  - Tuning for Linux platforms
    - Startup Files
    - File Descriptors
    - Virtual Memory
    - Network Interface
    - Disk I/O Settings
    - TCP/IP Settings
  - Tuning UltraSPARC CMT-Based Systems
    - Tuning Operating System and TCP Settings
    - Disk Configuration
    - Network Configuration

List of Examples

- 4–1 Heap Configuration on Solaris
- 4–2 Heap Configuration on Windows
- 5–1 Setting the UDP Buffer Size in the /etc/sysctl.conf File
- 5–2 Setting the UDP Buffer Size at Runtime

List of Tables

- 1–1 Performance Tuning Roadmap
- 1–2 Factors That Affect Performance
- 3–1 Bean Type Pooling or Caching
- 3–2 Tunable ORB Parameters
- 3–3 Connection Pool Sizing
- 4–1 Maximum Address Space Per Process
- 5–1 Tuning Parameters for Solaris
- 5–2 Tuning 64-bit Systems for Performance Benchmarking

Preface

The Performance Tuning Guide describes how to get the best performance with GlassFish Server 4.0.

This preface contains information about and conventions for the entire GlassFish Server Open Source Edition (GlassFish Server) documentation set.

GlassFish Server 4.0 is developed through the GlassFish project open-source community at http://glassfish.java.net/. The GlassFish project provides a structured process for developing the GlassFish Server platform that makes the new features of the Java EE platform available faster, while maintaining the most important feature of Java EE: compatibility. It enables Java developers to access the GlassFish Server source code and to contribute to the development of the GlassFish Server. The GlassFish project is designed to encourage communication between Oracle engineers and the community.

Oracle GlassFish Server Documentation Set

| Book Title | Description |
|---|---|
| Release Notes | Provides late-breaking information about the software and the documentation and includes a comprehensive, table-based summary of the supported hardware, operating system, Java Development Kit (JDK), and database drivers. |
| Quick Start Guide | Explains how to get started with the GlassFish Server product. |
| Installation Guide | Explains how to install the software and its components. |
| Upgrade Guide | Explains how to upgrade to the latest version of GlassFish Server. This guide also describes differences between adjacent product releases and configuration options that can result in incompatibility with the product specifications. |
| Deployment Planning Guide | Explains how to build a production deployment of GlassFish Server that meets the requirements of your system and enterprise. |
| Administration Guide | Explains how to configure, monitor, and manage GlassFish Server subsystems and components from the command line by using the `asadmin` utility. Instructions for performing these tasks from the Administration Console are provided in the Administration Console online help. |
| Security Guide | Provides instructions for configuring and administering GlassFish Server security. |
| Application Deployment Guide | Explains how to assemble and deploy applications to the GlassFish Server and provides information about deployment descriptors. |
| Application Development Guide | Explains how to create and implement Java Platform, Enterprise Edition (Java EE platform) applications that are intended to run on the GlassFish Server. These applications follow the open Java standards model for Java EE components and application programmer interfaces (APIs). This guide provides information about developer tools, security, and debugging. |
| Embedded Server Guide | Explains how to run applications in embedded GlassFish Server and to develop applications in which GlassFish Server is embedded. |
| High Availability Administration Guide | Explains how to configure GlassFish Server to provide higher availability and scalability through failover and load balancing. |
| Performance Tuning Guide | Explains how to optimize the performance of GlassFish Server. |
| Troubleshooting Guide | Describes common problems that you might encounter when using GlassFish Server and explains how to solve them. |
| Error Message Reference | Describes error messages that you might encounter when using GlassFish Server. |
| Reference Manual | Provides reference information in man page format for GlassFish Server administration commands, utility commands, and related concepts. |
| Message Queue Release Notes | Describes new features, compatibility issues, and existing bugs for Open Message Queue. |
| Message Queue Technical Overview | Provides an introduction to the technology, concepts, architecture, capabilities, and features of the Message Queue messaging service. |
| Message Queue Administration Guide | Explains how to set up and manage a Message Queue messaging system. |
| Message Queue Developer's Guide for JMX Clients | Describes the application programming interface in Message Queue for programmatically configuring and monitoring Message Queue resources in conformance with the Java Management Extensions (JMX). |
| Message Queue Developer's Guide for Java Clients | Provides information about concepts and procedures for developing Java messaging applications (Java clients) that work with GlassFish Server. |
| Message Queue Developer's Guide for C Clients | Provides programming and reference information for developers working with Message Queue who want to use the C language binding to the Message Queue messaging service to send, receive, and process Message Queue messages. |

Typographic Conventions

The following table describes the typographic changes that are used in this book.

| Typeface | Meaning | Example |
|---|---|---|
| `AaBbCc123` | The names of commands, files, and directories, and onscreen computer output | Edit your `.login` file. Use `ls -a` to list all files. `machine_name% you have mail.` |
| **`AaBbCc123`** | What you type, contrasted with onscreen computer output | `machine_name%` **`su`** `Password:` |
| *AaBbCc123* | A placeholder to be replaced with a real name or value | The command to remove a file is `rm` *filename*. |
| *AaBbCc123* | Book titles, new terms, and terms to be emphasized (note that some emphasized items appear bold online) | Read Chapter 6 in the *User's Guide*. A *cache* is a copy that is stored locally. Do *not* save the file. |

Symbol Conventions

The following table explains symbols that might be used in this book.

| Symbol | Description | Example | Meaning |
|---|---|---|---|
| `[ ]` | Contains optional arguments and command options. | `ls [-l]` | The `-l` option is not required. |
| `{ \| }` | Contains a set of choices for a required command option. | `-d {y\|n}` | The `-d` option requires that you use either the `y` argument or the `n` argument. |
| `${ }` | Indicates a variable reference. | `${com.sun.javaRoot}` | References the value of the `com.sun.javaRoot` variable. |
| `-` | Joins simultaneous multiple keystrokes. | Control-A | Press the Control key while you press the A key. |
| `+` | Joins consecutive multiple keystrokes. | Ctrl+A+N | Press the Control key, release it, and then press the subsequent keys. |
| `>` | Indicates menu item selection in a graphical user interface. | File > New > Templates | From the File menu, choose New. From the New submenu, choose Templates. |

Default Paths and File Names

The following table describes the default paths and file names that are used in this book.

| Placeholder | Description | Default Value |
|---|---|---|
| as-install | Represents the base installation directory for GlassFish Server. In configuration files, as-install is represented as follows: `${com.sun.aas.installRoot}` | Installations on the Oracle Solaris operating system, Linux operating system, and Mac OS operating system: `user's-home-directory/glassfish3/glassfish`. Installations on the Windows operating system: `SystemDrive:\glassfish3\glassfish` |
| as-install-parent | Represents the parent of the base installation directory for GlassFish Server. | Installations on the Oracle Solaris operating system, Linux operating system, and Mac OS operating system: `user's-home-directory/glassfish3`. Installations on the Windows operating system: `SystemDrive:\glassfish3` |
| domain-root-dir | Represents the directory in which a domain is created by default. | `as-install/domains/` |
| domain-dir | Represents the directory in which a domain's configuration is stored. In configuration files, domain-dir is represented as follows: `${com.sun.aas.instanceRoot}` | `domain-root-dir/domain-name` |
| instance-dir | Represents the directory for a server instance. | `domain-dir/instance-name` |

Documentation, Support, and Training

The Oracle web site provides information about the following additional resources:

■ Documentation (http://docs.oracle.com/)
■ Support (http://www.oracle.com/us/support/index.html)
■ Training (http://education.oracle.com/)

Documentation Accessibility

For information about Oracle's commitment to accessibility, visit the Oracle Accessibility Program website at http://www.oracle.com/pls/topic/lookup?ctx=acc&id=docacc.

Access to Oracle Support

Oracle customers have access to electronic support through My Oracle Support. For information, visit http://www.oracle.com/pls/topic/lookup?ctx=acc&id=info or visit http://www.oracle.com/pls/topic/lookup?ctx=acc&id=trs if you are hearing impaired.
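The placeholders in the Default Paths and File Names table nest inside one another: domain-root-dir defaults to as-install/domains, domain-dir is domain-root-dir/domain-name, and instance-dir is domain-dir/instance-name. The following is a minimal sketch of those relationships only. The class name `DefaultPaths`, its method names, and the sample domain name `domain1` are illustrative assumptions and are not part of GlassFish Server.

```java
import java.nio.file.Path;
import java.nio.file.Paths;

// Hypothetical helper (not a GlassFish API): composes the default
// locations described in the Default Paths and File Names table.
public class DefaultPaths {

    // domain-root-dir defaults to as-install/domains
    static Path domainRootDir(Path asInstall) {
        return asInstall.resolve("domains");
    }

    // domain-dir is domain-root-dir/domain-name
    static Path domainDir(Path asInstall, String domainName) {
        return domainRootDir(asInstall).resolve(domainName);
    }

    // instance-dir is domain-dir/instance-name
    static Path instanceDir(Path asInstall, String domainName, String instanceName) {
        return domainDir(asInstall, domainName).resolve(instanceName);
    }

    public static void main(String[] args) {
        // Assumption: as-install follows the documented UNIX-style default,
        // user's-home-directory/glassfish3/glassfish.
        Path asInstall = Paths.get(System.getProperty("user.home"), "glassfish3", "glassfish");

        // "domain1" is only a sample domain name for illustration.
        System.out.println("domain-root-dir: " + domainRootDir(asInstall));
        System.out.println("domain-dir:      " + domainDir(asInstall, "domain1"));
    }
}
```

If your installation uses a non-default location, pass that directory as `asInstall` instead of deriving it from the home directory.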

1 Overview of GlassFish Server Performance Tuning

You can significantly improve performance of the Oracle GlassFish Server and of applications deployed to it by adjusting a few deployment and server configuration settings. However, it is important to understand the environment and performance goals. An optimal configuration for a production environment might not be optimal for a development environment.

The following topics are addressed here:

■ Process Overview
■ Understanding Operational Requirements
■ General Tuning Concepts
■ Further Information

Process Overview

The following table outlines the overall GlassFish Server 4.0 administration process, and shows where performance tuning fits in the sequence.

Table 1–1 Performance Tuning Roadmap

| Step | Description of Task | Location of Instructions |
|---|---|---|
| 1 | Design: Decide on the high-availability topology and set up GlassFish Server. | GlassFish Server Open Source Edition Deployment Planning Guide |
| 2 | Capacity Planning: Make sure the systems have sufficient resources to perform well. | GlassFish Server Open Source Edition Deployment Planning Guide |
| 3 | Installation: Configure your DAS, clusters, and clustered server instances. | GlassFish Server Open Source Edition Installation Guide |


Performance Tuning Sequence

Application developers should tune applications prior to production use. Tuning

applications often produces dramatic performance improvements. System

administrators perform the remaining steps in the following list after tuning the

application, or when application tuning has to wait and you want to improve

performance as much as possible in the meantime.

Ideally, follow this sequence of steps when you are tuning performance:

1. Tune your application, described in Tuning Your Application.

2. Tune the server, described in Tuning the GlassFish Server.

3. Tune the Java runtime system, described in Tuning the Java Runtime System.

4. Tune the operating system, described in Tuning the Operating System and

Platform.

Understanding Operational Requirements

Before you begin to deploy and tune your application on the GlassFish Server, it is

important to clearly define the operational environment. The operational environment

is determined by high-level constraints and requirements such as:

■Application Architecture

■Security Requirements

■High Availability Clustering, Load Balancing, and Failover

■Hardware Resources

■Administration

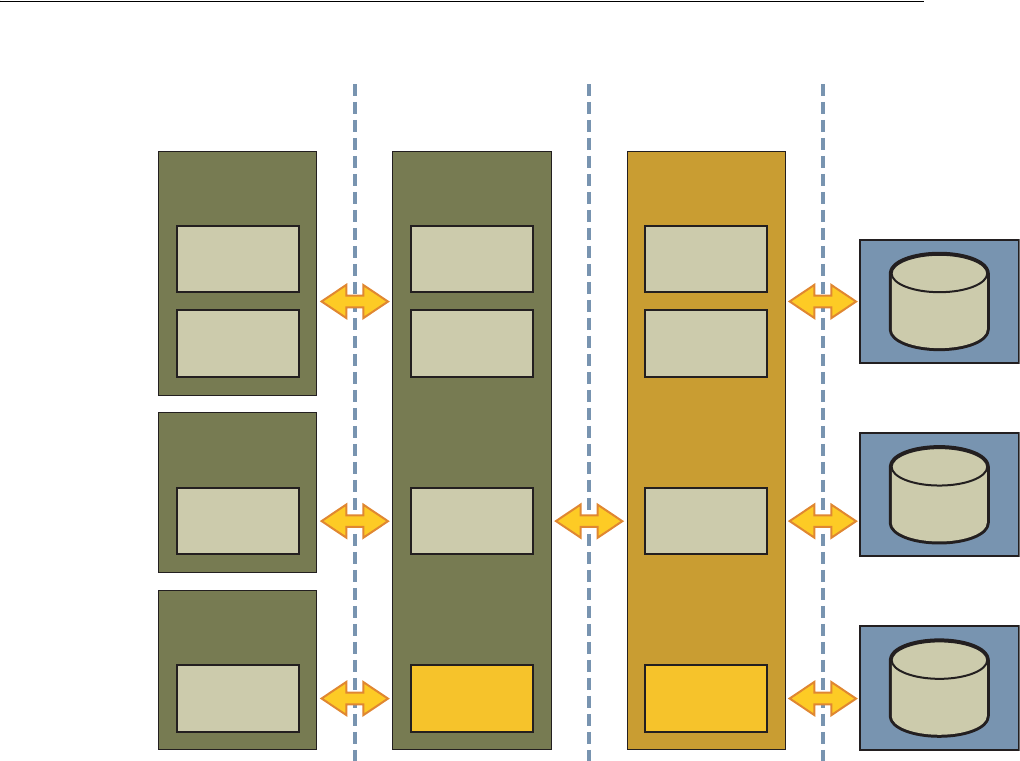

Application Architecture

The Java EE Application model, as shown in the following figure, is very flexible,

allowing the application architect to split application logic functionally into many

tiers. The presentation layer is typically implemented using servlets and JSP

technology and executes in the web container.

Table 1–1 (Cont.) Performance Tuning Roadmap

| Step | Description of Task | Location of Instructions |
|---|---|---|
| 4 | Deployment: Install and run your applications. Familiarize yourself with how to configure and administer the GlassFish Server. | The following books: GlassFish Server Open Source Edition Application Deployment Guide; GlassFish Server Open Source Edition Administration Guide |
| 5 | High Availability Configuration: Configuring your DAS, clusters, and clustered server instances for high availability and failover | GlassFish Server Open Source Edition High Availability Administration Guide |
| 6 | Performance Tuning: Tune the following items: Applications; GlassFish Server; Java Runtime System; Operating system and platform | The following chapters: Tuning Your Application; Tuning the GlassFish Server; Tuning the Java Runtime System; Tuning the Operating System and Platform |


Figure 1–1 Java EE Application Model

Moderately complex enterprise applications can be developed entirely using servlets

and JSP technology. More complex business applications often use Enterprise

JavaBeans (EJB) components. The GlassFish Server integrates the Web and EJB

containers in a single process. Local access to EJB components from servlets is very

efficient. However, some application deployments may require EJB components to

execute in a separate process and be accessible from standalone client applications as

well as servlets. Based on the application architecture, the server administrator can

employ the GlassFish Server in multiple tiers, or simply host both the presentation and

business logic on a single tier.

It is important to understand the application architecture before designing a new

GlassFish Server deployment, and when deploying a new business application to an

existing application server deployment.

Security Requirements

Most business applications require security. This section discusses security

considerations and decisions.

User Authentication and Authorization

Application users must be authenticated. The GlassFish Server provides a number of

choices for user authentication, including file-based, administration, LDAP, certificate,

JDBC, digest, PAM, Solaris, and custom realms.

[Figure 1–1 labels. Client-Side Presentation: Browser, Pure HTML, Java Applet, Desktop, Java Application, Other Device, J2EE Client. Server-Side Presentation: Web Server, JSP, Java Servlet, J2EE Platform. Server-Side Business Logic: EJB Container, EJB, J2EE Platform. Enterprise Information System.]


The default file-based security realm is suitable for developer environments, where

new applications are developed and tested. At deployment time, the server

administrator can choose between the Lightweight Directory Access Protocol (LDAP)

or Solaris security realms. Many large enterprises use LDAP-based directory servers to

maintain employee and customer profiles. Small to medium enterprises that do not

already use a directory server may find it advantageous to leverage investment in

Solaris security infrastructure.

For more information on security realms, see "Administering Authentication Realms"

in GlassFish Server Open Source Edition Security Guide.

The type of authentication mechanism chosen may require additional hardware for the

deployment. Typically a directory server executes on a separate server, and may also

require a backup for replication and high availability. Refer to the Oracle Java System

Directory Server

(http://www.oracle.com/us/products/middleware/identity-management/oracle-directory-services/index.html)

documentation for more information on deployment, sizing, and availability guidelines.

An authenticated user's access to application functions may also need authorization

checks. If the application uses the role-based Java EE authorization checks, the

application server performs some additional checking, which incurs additional

overhead. When you perform capacity planning, you must take this additional

overhead into account.

Encryption

For security reasons, sensitive user inputs and application output must be encrypted.

Most business-oriented web applications encrypt all or some of the communication

flow between the browser and GlassFish Server. Online shopping applications encrypt

traffic when the user is completing a purchase or supplying private data. Portal

applications such as news and media typically do not employ encryption. Secure

Sockets Layer (SSL) is the most common security framework, and is supported by

many browsers and application servers.

The GlassFish Server supports SSL 2.0 and 3.0 and contains software support for

various cipher suites. It also supports integration of hardware encryption cards for

even higher performance. Security considerations, particularly when using the

integrated software encryption, will impact hardware sizing and capacity planning.

Consider the following when assessing the encryption needs for a deployment:

■What is the nature of the applications with respect to security? Do they encrypt all

or only a part of the application inputs and output? What percentage of the

information needs to be securely transmitted?

■Are the applications going to be deployed on an application server that is directly

connected to the Internet? Will a web server exist in a demilitarized zone (DMZ)

separate from the application server tier and backend enterprise systems?

A DMZ-style deployment is recommended for high security. It is also useful when

the application has a significant amount of static text and image content and some

business logic that executes on the GlassFish Server, behind the most secure

firewall. GlassFish Server provides secure reverse proxy plugins to enable

integration with popular web servers. The GlassFish Server can also be deployed

and used as a web server in a DMZ.

■Is encryption required between the web servers in the DMZ and application

servers in the next tier? The reverse proxy plugins supplied with GlassFish Server

support SSL encryption between the web server and application server tier. If SSL

is enabled, hardware capacity planning must take into account the encryption

policy and mechanisms.

■If software encryption is to be employed:

–What is the expected performance overhead for every tier in the system, given

the security requirements?

–What are the performance and throughput characteristics of various choices?

For information on how to encrypt the communication between web servers and

GlassFish Server, see "Administering Message Security" in GlassFish Server Open Source

Edition Security Guide.

High Availability Clustering, Load Balancing, and Failover

GlassFish Server 4.0 enables multiple GlassFish Server instances to be clustered to

provide high availability through failure protection, scalability, and load balancing.

High availability applications and services provide their functionality continuously,

regardless of hardware and software failures. To make such reliability possible,

GlassFish Server 4.0 provides mechanisms for maintaining application state data

between clustered GlassFish Server instances. Application state data, such as HTTP

session data, stateful EJB sessions, and dynamic cache information, is replicated in real

time across server instances. If any one server instance goes down, the session state is

available to the next failover server, resulting in minimum application downtime and

enhanced transactional security.

GlassFish Server provides the following high availability features:

■High Availability Session Persistence

■High Availability Java Message Service

■RMI-IIOP Load Balancing and Failover

See Tuning High Availability Persistence for high availability persistence tuning

recommendations.

See the GlassFish Server Open Source Edition High Availability Administration Guide for

complete information about configuring high availability clustering, load balancing,

and failover features in GlassFish Server 4.0.

Hardware Resources

The type and quantity of hardware resources available greatly influence performance

tuning and site planning.

GlassFish Server provides excellent vertical scalability. It can scale to efficiently utilize

multiple high-performance CPUs, using just one application server process. A smaller

number of application server instances makes maintenance easier and administration

less expensive. Also, deploying several related applications on fewer application

servers can improve performance, due to better data locality, and reuse of cached data

between co-located applications. Such servers must also contain large amounts of

memory, disk space, and network capacity to cope with increased load.

GlassFish Server can also be deployed on large "farms" of relatively modest hardware

units. Business applications can be partitioned across various server instances. Using

one or more external load balancers can efficiently spread user access across all the

application server instances. A horizontal scaling approach may improve availability

and lower hardware costs, and is suitable for some types of applications. However, this


approach requires administration of more application server instances and hardware

nodes.

Administration

A single GlassFish Server installation on a server can encompass multiple instances. A

group of one or more instances that are administered by a single Administration

Server is called a domain. Grouping server instances into domains permits different

people to independently administer the groups.

You can use a single-instance domain to create a "sandbox" for a particular developer

and environment. In this scenario, each developer administers his or her own

application server, without interfering with other application server domains. A small

development group may choose to create multiple instances in a shared administrative

domain for collaborative development.

In a deployment environment, an administrator can create domains based on

application and business function. For example, internal Human Resources

applications may be hosted on one or more servers in one administrative domain,

while external customer applications are hosted on several administrative domains in

a server farm.

GlassFish Server supports virtual server capability for web applications. For example,

a web application hosting service provider can host different URL domains on a single

GlassFish Server process for efficient administration.

For detailed information on administration, see the GlassFish Server Open Source Edition

Administration Guide.

General Tuning Concepts

Some key concepts that affect performance tuning are:

■User load

■Application scalability

■Margins of safety

The following table describes these concepts, and how they are measured in practice.

The leftmost column describes the general concept, the second column gives the

practical ramifications of the concept, the third column describes the measurements,

and the rightmost column describes the value sources.


Capacity Planning

The previous discussion guides you towards defining a deployment architecture.

However, you determine the actual size of the deployment by a process called capacity

planning. Capacity planning enables you to predict:

■The performance capacity of a particular hardware configuration.

■The hardware resources required to sustain specified application load and

performance.

You can estimate these values through careful performance benchmarking, using an

application with realistic data sets and workloads.

To Determine Capacity

1. Determine performance on a single CPU.

First determine the largest load that a single processor can sustain. You can obtain

this figure by measuring the performance of the application on a single-processor

machine. Either leverage the performance numbers of an existing application with

similar processing characteristics or, ideally, use the actual application and

workload in a testing environment. Make sure that the application and data

resources are tiered exactly as they would be in the final deployment.

Table 1–2 Factors That Affect Performance

| Concept | In practice | Measurement | Value sources |
|---|---|---|---|
| User Load | Concurrent sessions at peak load | Transactions Per Minute (TPM); Web Interactions Per Second (WIPS) | (Max. number of concurrent users) * (expected response time) / (time between clicks). Example: (100 users * 2 sec) / 10 sec = 20 |
| Application Scalability | Transaction rate measured on one CPU | TPM or WIPS | Measured from workload benchmark. Perform at each tier. |
| Vertical scalability | Increase in performance from additional CPUs | Percentage gain per additional CPU | Based on curve fitting from benchmark. Perform tests while gradually increasing the number of CPUs. Identify the "knee" of the curve, where additional CPUs are providing uneconomical gains in performance. Requires tuning as described in this guide. Perform at each tier and iterate if necessary. Stop here if this meets performance requirements. |
| Horizontal scalability | Increase in performance from additional servers | Percentage gain per additional server process and/or hardware node. | Use a well-tuned single application server instance, as in previous step. Measure how much each additional server instance and hardware node improves performance. |
| Safety Margins | High availability requirements | If the system must cope with failures, size the system to meet performance requirements assuming that one or more application server instances are non-functional | Different equations used if high availability is required. |
| Safety Margins | Excess capacity for unexpected peaks | It is desirable to operate a server at less than its benchmarked peak, for some safety margin | 80% system capacity utilization at peak loads may work for most installations. Measure your deployment under real and simulated peak loads. |


2. Determine vertical scalability.

Determine how much additional performance you gain when you add processors.

That is, you are indirectly measuring the amount of shared resource contention

that occurs on the server for a specific workload. Either obtain this information

based on additional load testing of the application on a multiprocessor system, or

leverage existing information from a similar application that has already been load

tested.

Running a series of performance tests on one to eight CPUs, in incremental steps,

generally provides a sense of the vertical scalability characteristics of the system.

Be sure to properly tune the application, GlassFish Server, backend database

resources, and operating system so that they do not skew the results.

3. Determine horizontal scalability.

If sufficiently powerful hardware resources are available, a single hardware node

may meet the performance requirements. However, for better availability, you can

cluster two or more systems. Employing external load balancers and workload

simulation, determine the performance benefits of replicating one well-tuned

application server node, as determined in step 2.
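The user-load formula and the 80% utilization guideline from Table 1–2 can be worked through in a few lines of Java. This is only a sketch: the class and method names are hypothetical, the inputs are the example figures from the table, and real sizing should come from the benchmarks described in the steps above.

```java
// Hypothetical helper for illustration; not part of GlassFish Server.
final class CapacityEstimate {

    // Table 1-2: (max. concurrent users) * (expected response time) / (time between clicks).
    static double concurrentLoad(int maxConcurrentUsers,
                                 double responseTimeSec,
                                 double timeBetweenClicksSec) {
        return maxConcurrentUsers * responseTimeSec / timeBetweenClicksSec;
    }

    // Benchmarked capacity needed so that the peak load uses only the target
    // fraction of the system (for example, 0.8 for 80% utilization).
    static double requiredCapacity(double peakLoad, double targetUtilization) {
        return peakLoad / targetUtilization;
    }

    public static void main(String[] args) {
        double load = concurrentLoad(100, 2.0, 10.0);
        System.out.println("Concurrent load at peak: " + load);
        System.out.println("Capacity to benchmark for: " + requiredCapacity(load, 0.8));
    }
}
```

With the table's example of 100 users, a 2-second response time, and 10 seconds between clicks, the concurrent load is 20, so a system run at 80% utilization would need a benchmarked capacity of about 25.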

User Expectations

Application end-users generally have some performance expectations. Often you can

numerically quantify them. To ensure that customer needs are met, you must

understand these expectations clearly, and use them in capacity planning.

Consider the following questions regarding performance expectations:

■What do users expect the average response times to be for various interactions

with the application? What are the most frequent interactions? Are there any

extremely time-critical interactions? What is the length of each transaction,

including think time? In many cases, you may need to perform empirical user

studies to get good estimates.

■What are the anticipated steady-state and peak user loads? Are there any

particular times of the day, week, or year when you observe or expect to observe

load peaks? While there may be several million registered customers for an online

business, at any one time only a fraction of them are logged in and performing

business transactions. A common mistake during capacity planning is to use the

total size of customer population as the basis and not the average and peak

numbers for concurrent users. The number of concurrent users also may exhibit

patterns over time.

■What are the average and peak amounts of data transferred per request? This value

is also application-specific. Good estimates for content size, combined with other

usage patterns, will help you anticipate network capacity needs.

■What is the expected growth in user load over the next year? Planning ahead for

the future will help avoid crisis situations and system downtimes for upgrades.

Further Information

■For more information on Java performance, see Java Performance Documentation

(http://java.sun.com/docs/performance) and Java Performance

BluePrints

(http://java.sun.com/blueprints/performance/index.html).


■For more information about performance tuning for high availability

configurations, see the GlassFish Server Open Source Edition High Availability

Administration Guide.

■For complete information about using the Performance Tuning features available

through the GlassFish Server Administration Console, refer to the Administration

Console online help.

■For details on optimizing EJB components, see Seven Rules for Optimizing Entity

Beans

(http://java.sun.com/developer/technicalArticles/ebeans/sevenrules/).

■For details on profiling, see "Profiling Tools" in GlassFish Server Open Source Edition

Application Development Guide.

■To view a demonstration video showing how to use the GlassFish Server

Performance Tuner, see the Oracle GlassFish Server 3.1 - Performance Tuner demo

(http://www.youtube.com/watch?v=FavsE2pzAjc).

■To find additional Performance Tuning development information, see the

Performance Tuner in Oracle GlassFish Server 3.1

(http://blogs.oracle.com/jenblog/entry/performance_tuner_in_oracle_glassfish) blog.


2 Tuning Your Application

This chapter provides information on tuning applications for maximum performance.

A complete guide to writing high performance Java and Java EE applications is

beyond the scope of this document.

The following topics are addressed here:

■Java Programming Guidelines

■Java Server Page and Servlet Tuning

■EJB Performance Tuning

Java Programming Guidelines

This section covers issues related to Java coding and performance. The guidelines

outlined are not specific to GlassFish Server, but are general rules that are useful in

many situations. For a complete discussion of Java coding best practices, see the Java

Blueprints

(http://www.oracle.com/technetwork/java/javaee/blueprints/index.html).

The following topics are addressed here:

■Avoid Serialization and Deserialization

■Use StringBuilder to Concatenate Strings

■Assign null to Variables That Are No Longer Needed

■Declare Methods as final Only If Necessary

■Declare Constants as static final

■Avoid Finalizers

■Declare Method Arguments final

■Synchronize Only When Necessary

■Use DataHandlers for SOAP Attachments

Avoid Serialization and Deserialization

Serialization and deserialization of objects is a CPU-intensive procedure and is likely

to slow down your application. Use the transient keyword to reduce the amount of

data serialized. Additionally, customized readObject() and writeObject() methods

may be beneficial in some cases.
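As a minimal sketch of both techniques, the class below marks a derived field transient so that it is not written to the stream, and uses a custom readObject() to rebuild it after deserialization. The UserProfile class, its fields, and the roundTrip helper are hypothetical and exist only for illustration.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.ObjectInputStream;
import java.io.ObjectOutputStream;
import java.io.Serializable;
import java.io.UncheckedIOException;

// Hypothetical example class; not part of any GlassFish API.
final class UserProfile implements Serializable {
    private static final long serialVersionUID = 1L;

    private final String userName;
    // Derived data is cheap to rebuild, so it is excluded from the serialized form.
    private transient String displayLabel;

    UserProfile(String userName) {
        this.userName = userName;
        this.displayLabel = buildLabel(userName);
    }

    private static String buildLabel(String name) {
        return "User: " + name;
    }

    String userName() { return userName; }
    String displayLabel() { return displayLabel; }

    // Custom hook: read the non-transient fields, then recompute the transient one.
    private void readObject(ObjectInputStream in) throws IOException, ClassNotFoundException {
        in.defaultReadObject();
        displayLabel = buildLabel(userName);
    }

    // Serializes and deserializes a profile in memory to show the effect.
    static UserProfile roundTrip(UserProfile profile) {
        try {
            ByteArrayOutputStream bytes = new ByteArrayOutputStream();
            try (ObjectOutputStream out = new ObjectOutputStream(bytes)) {
                out.writeObject(profile);
            }
            try (ObjectInputStream in =
                     new ObjectInputStream(new ByteArrayInputStream(bytes.toByteArray()))) {
                return (UserProfile) in.readObject();
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        } catch (ClassNotFoundException e) {
            throw new IllegalStateException(e);
        }
    }
}
```

Whether this saves measurable time depends on how large the excluded data is compared with the cost of rebuilding it, so it is worth profiling before and after.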


Use StringBuilder to Concatenate Strings

To improve performance, instead of using string concatenation, use

StringBuilder.append().

String objects are immutable - that is, they never change after creation. For example,

consider the following code:

String str = "testing";

str = str + "abc";

str = str + "def";

The compiler translates this code as:

String str = "testing";

StringBuilder tmp = new StringBuilder(str);

tmp.append("abc");

str = tmp.toString();

tmp = new StringBuilder(str);

tmp.append("def");

str = tmp.toString();

This copying is inherently expensive and overusing it can reduce performance

significantly. You are far better off writing:

StringBuilder tmp = new StringBuilder("testing");

tmp.append("abc");

tmp.append("def");

String str = tmp.toString();
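The cost of repeated concatenation is most visible in loops, where every pass would otherwise create a new temporary builder and a new String. The following sketch, using a hypothetical CsvJoiner class, reuses one StringBuilder for the whole loop.

```java
// Hypothetical example class for illustration.
final class CsvJoiner {

    // Joins the values with commas using a single StringBuilder for the whole loop.
    static String join(String[] values) {
        StringBuilder sb = new StringBuilder();
        for (int i = 0; i < values.length; i++) {
            if (i > 0) {
                sb.append(',');
            }
            sb.append(values[i]);
        }
        return sb.toString();
    }

    public static void main(String[] args) {
        System.out.println(join(new String[] {"testing", "abc", "def"}));
    }
}
```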

Assign null to Variables That Are No Longer Needed

Explicitly assigning a null value to variables that are no longer needed helps the

garbage collector to identify the parts of memory that can be safely reclaimed.

Although Java provides memory management, it does not prevent memory leaks or

using excessive amounts of memory.

An application may induce memory leaks by not releasing object references. Doing so

prevents the Java garbage collector from reclaiming those objects, and results in

increasing amounts of memory being used. Explicitly nullifying references to variables

after their use allows the garbage collector to reclaim memory.

One way to detect memory leaks is to employ profiling tools and take memory

snapshots after each transaction. A leak-free application in steady state will show a

steady active heap memory after garbage collections.
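One situation where clearing a reference clearly matters is a long-lived container that manages its own storage. In the sketch below, a hypothetical SimpleStack class, the array slot is set to null when an element is popped. Without that assignment the array would keep the popped object reachable and the garbage collector could not reclaim it.

```java
import java.util.Arrays;

// Hypothetical example class for illustration.
final class SimpleStack {
    private Object[] elements = new Object[16];
    private int size;

    void push(Object element) {
        if (size == elements.length) {
            elements = Arrays.copyOf(elements, size * 2);
        }
        elements[size++] = element;
    }

    Object pop() {
        if (size == 0) {
            throw new IllegalStateException("stack is empty");
        }
        Object top = elements[--size];
        elements[size] = null; // release the obsolete reference so it can be collected
        return top;
    }

    int size() {
        return size;
    }
}
```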

Declare Methods as final Only If Necessary

Modern optimizing dynamic compilers can perform inlining and other

inter-procedural optimizations, even if Java methods are not declared final. Use the

keyword final as it was originally intended: for program architecture reasons and

maintainability.

Only if you are absolutely certain that a method must not be overridden, use the

final keyword.

Declare Constants as static final

The dynamic compiler can perform some constant folding optimizations easily when

you declare constants as static final variables.
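A small sketch of the pattern follows. The Timeouts class and its values are hypothetical. Because the fields are static final and initialized from constant expressions, the derived value can be computed once instead of on every use.

```java
// Hypothetical example class; the names and values are illustrative only.
final class Timeouts {
    static final int SECONDS_PER_MINUTE = 60;
    static final int SESSION_TIMEOUT_MINUTES = 30;

    // A constant expression built only from other constants.
    static final int SESSION_TIMEOUT_SECONDS = SESSION_TIMEOUT_MINUTES * SECONDS_PER_MINUTE;

    static boolean isExpired(int idleSeconds) {
        return idleSeconds > SESSION_TIMEOUT_SECONDS;
    }
}
```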


Avoid Finalizers

Adding finalizers to code makes garbage collection more expensive and

unpredictable. The virtual machine does not guarantee the time at which finalizers are

run. Finalizers may not always be executed before the program exits. Releasing

critical resources in finalize() methods may lead to unpredictable application

behavior.
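A common alternative is to release resources explicitly and deterministically. The sketch below assumes Java SE 7 or later and uses a hypothetical TrackedResource class that implements AutoCloseable. The try-with-resources statement calls close() as soon as the block exits, so no finalize() method is needed.

```java
// Hypothetical resource class for illustration.
final class TrackedResource implements AutoCloseable {
    private boolean open = true;

    boolean isOpen() {
        return open;
    }

    String read() {
        if (!open) {
            throw new IllegalStateException("resource is closed");
        }
        return "data";
    }

    // Called automatically at the end of a try-with-resources block.
    @Override
    public void close() {
        open = false;
    }

    // Uses the resource and returns it afterwards so the caller can inspect its state.
    static TrackedResource useAndRelease() {
        TrackedResource resource = new TrackedResource();
        try (TrackedResource r = resource) {
            r.read();
        }
        return resource;
    }
}
```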

Declare Method Arguments final

Declare method arguments final if they are not modified in the method. In general,

declare all variables final if they are not modified after being initialized or set to some

value.

Synchronize Only When Necessary

Do not synchronize code blocks or methods unless synchronization is required. Keep

synchronized blocks or methods as short as possible to avoid scalability bottlenecks.

Use the Java Collections Framework for unsynchronized data structures instead of

more expensive alternatives such as java.util.Hashtable.
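The following sketch illustrates both points with a hypothetical HitCounter class. It stores counts in an unsynchronized java.util.HashMap and guards only the map access with a short synchronized block. The string normalization, which touches no shared state, runs outside the lock.

```java
import java.util.HashMap;
import java.util.Locale;
import java.util.Map;

// Hypothetical example class for illustration.
final class HitCounter {
    private final Map<String, Integer> hits = new HashMap<>();
    private final Object lock = new Object();

    void record(String rawPath) {
        // No shared state is involved here, so this work stays outside the lock.
        String key = rawPath.trim().toLowerCase(Locale.ROOT);

        // Only the shared map is guarded, which keeps the critical section short.
        synchronized (lock) {
            Integer current = hits.get(key);
            hits.put(key, current == null ? 1 : current + 1);
        }
    }

    int count(String path) {
        synchronized (lock) {
            Integer current = hits.get(path);
            return current == null ? 0 : current;
        }
    }
}
```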

Use DataHandlers for SOAP Attachments

Using a javax.activation.DataHandler for a SOAP attachment will improve

performance.

JAX-RPC specifies:

■A mapping of certain MIME types to Java types.

■Any MIME type is mappable to a javax.activation.DataHandler.

As a result, send an attachment (.gif or XML document) as a SOAP attachment to an

RPC style web service by utilizing the Java type mappings. When passing in any of the

mandated Java type mappings (appropriate for the attachment's MIME type) as an

argument for the web service, the JAX-RPC runtime handles these as SOAP

attachments.

For example, to send out an image/gif attachment, use java.awt.Image, or create a

DataHandler wrapper over your image. The advantages of using the wrapper are:

■Reduced coding: You can reuse generic attachment code to handle the attachments

because the DataHandler determines the content type of the contained data

automatically. This feature is especially useful when using a document style

service. Since the content is known at runtime, there is no need to make calls to

attachment.setContent(stringContent, "image/gif"), for example.

■Improved Performance: Informal tests have shown that using DataHandler

wrappers doubles throughput for image/gif MIME types, and multiplies

throughput by approximately 1.5 for text/xml or java.awt.Image for image/*

types.

Java Server Page and Servlet Tuning

Many applications running on the GlassFish Server use servlets or JavaServer Pages

(JSP) technology in the presentation tier. This section describes how to improve

performance of such applications, both through coding practices and through

deployment and configuration settings.


Suggested Coding Practices

This section provides some tips on coding practices that improve servlet and JSP

application performance.

The following topics are addressed here:

■General Guidelines

■Avoid Shared Modified Class Variables

■HTTP Session Handling

■Configuration and Deployment Tips

General Guidelines

Follow these general guidelines to increase performance of the presentation tier:

■Minimize Java synchronization in servlets.

■Do not use the single thread model for servlets.

■Use the servlet's init() method to perform expensive one-time initialization.

■Avoid using System.out.println() calls.

Avoid Shared Modified Class Variables

In the servlet multithread model (the default), a single instance of a servlet is created

for each application server instance. All requests for a servlet on that application

instance share the same servlet instance. This can lead to thread contention if there are

synchronization blocks in the servlet code. Therefore, avoid using shared modified

class variables because they create the need for synchronization.
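The idea can be shown without the servlet API. In this plain-Java sketch, the hypothetical GreetingHandler class stands in for a servlet whose single instance is called by many request threads. Per-request state is kept in local variables, which are confined to the calling thread, so no synchronization is needed.

```java
// Hypothetical stand-in for a servlet; it does not use the servlet API.
final class GreetingHandler {

    // Avoid a mutable field such as "private String currentUser;" here.
    // Every request thread would share it, and access would need synchronization.

    String handle(String userName) {
        // Local variables belong to the calling thread only.
        StringBuilder page = new StringBuilder();
        page.append("<p>Hello, ").append(userName).append("</p>");
        return page.toString();
    }
}
```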

HTTP Session Handling

Follow these guidelines when using HTTP sessions:

■Create sessions sparingly. Session creation is not free. If a session is not required,

do not create one.

■Use javax.servlet.http.HttpSession.invalidate() to release sessions when

they are no longer needed.

■Keep session size small, to reduce response times. If possible, keep session size

below 7 kilobytes.

■Use the directive <%@ page session="false" %> in JSP files to prevent the GlassFish

Server from automatically creating sessions when they are not necessary.

■Avoid large object graphs in an HttpSession. They force serialization and add

computational overhead. Generally, do not store large objects as HttpSession

variables.

■Do not cache transaction data in an HttpSession. Access to data in an

HttpSession is not transactional. Do not use it as a cache of transactional data,

which is better kept in the database and accessed using entity beans. Transactions

will roll back upon failures to their original state. However, stale and inaccurate

data may remain in HttpSession objects. GlassFish Server provides "read-only"

bean-managed persistence entity beans for cached access to read-only data.


Configuration and Deployment Tips

Follow these configuration tips to improve performance. These tips are intended for

production environments, not development environments.

■To improve class loading time, avoid having excessive directories in the server

CLASSPATH. Put application-related classes into JAR files.

■HTTP response times are dependent on how the keep-alive subsystem and the

HTTP server are tuned in general. For more information, see HTTP Service Settings.

■Cache servlet results when possible. For more information, see "Developing Web

Applications" in GlassFish Server Open Source Edition Application Development Guide.

■If an application does not contain any EJB components, deploy the application as a

WAR file, not an EAR file.

Optimize SSL

Optimize SSL by using routines in the appropriate operating system

library for concurrent access to heap space. The library to use depends on the version

of the Solaris Operating System (Solaris OS) that you are using. To ensure that you use

the correct library, set the LD_PRELOAD environment variable to specify the correct

library file. For more information, refer to the following table.

To set the LD_PRELOAD environment variable, edit the entry for this environment

variable in the startserv script. The startserv script is located in the bin directory

of your domain (bin/startserv).

The exact syntax to define an environment variable depends on the shell that you are

using.

Disable Security Manager

The security manager is expensive because calls to required

resources must call the doPrivileged() method and must also check the resource with

the server.policy file. If you are sure that no malicious code will be run on the server

and you do not use authentication within your application, then you can disable the

security manager.

See "Enabling and Disabling the Security Manager" in GlassFish Server Open Source

Edition Application Development Guide for instructions on enabling or disabling the

security manager. If using the GlassFish Server Administration Console, navigate to

the Configurations>configuration-name>Security node and check or uncheck the

Security Manager option as desired. Refer to the Administration Console online help

for more information.

EJB Performance Tuning

The GlassFish Server's high-performance EJB container has numerous parameters that

affect performance. Individual EJB components also have parameters that affect

performance. The value of an individual EJB component's parameter overrides the value

of the same parameter for the EJB container. The default values are designed for a

single-processor computer system. Modify these values as appropriate to optimize for

other system configurations.

The following topics are addressed here:

Solaris OS Version Library Setting of

LD_PRELOAD

Environment Variable

10

libumem3LIB /usr/lib/libumem.so

9

libmtmalloc3LIB /usr/lib/libmtmalloc.so

EJB Performance Tuning

2-6 GlassFish Server Open Source Edition 4.0 Performance Tuning Guide

■Goals

■Monitoring EJB Components

■General Guidelines

■Using Local and Remote Interfaces

■Improving Performance of EJB Transactions

■Using Special Techniques

■Tuning Tips for Specific Types of EJB Components

■JDBC and Database Access

■Tuning Message-Driven Beans

Goals

The goals of EJB performance tuning are:

■Increased speed: Cache as many beans in the EJB caches as possible to increase

speed (equivalently, decrease response time). Caching eliminates CPU-intensive

operations. However, since memory is finite, as the caches become larger,

housekeeping for them (including garbage collection) takes longer.

■Decreased memory consumption: Beans in the pools or caches consume memory

from the Java virtual machine heap. Very large pools and caches degrade

performance because they require longer and more frequent garbage collection

cycles.

■Improved functional properties: Functional properties such as user timeout,

commit options, security, and transaction options, are mostly related to the

functionality and configuration of the application. Generally, they do not

compromise functionality for performance. In some cases, you might be forced to

make a "trade-off" decision between functionality and performance. This section

offers suggestions in such cases.

Monitoring EJB Components

When the EJB container has monitoring enabled, you can examine statistics for

individual beans based on the bean pool and cache settings.

For example, the monitoring command below returns the Bean Cache statistics for a

stateful session bean.

asadmin get --user admin --host e4800-241-a --port 4848 \
  -m specjcmp.application.SPECjAppServer.ejb-module.supplier_jar.stateful-session-bean.BuyerSes.bean-cache.*

The following is a sample of the monitoring output:

resize-quantity = -1

cache-misses = 0

idle-timeout-in-seconds = 0

num-passivations = 0

cache-hits = 59

num-passivation-errors = 0

total-beans-in-cache = 59

num-expired-sessions-removed = 0

max-beans-in-cache = 4096

num-passivation-success = 0


The monitoring command below gives the bean pool statistics for an entity bean:

asadmin get --user admin --host e4800-241-a --port 4848 \
  -m specjcmp.application.SPECjAppServer.ejb-module.supplier_jar.entity-bean.ItemEnt.bean-pool.*

idle-timeout-in-seconds = 0

steady-pool-size = 0

total-beans-destroyed = 0

num-threads-waiting = 0

num-beans-in-pool = 54

max-pool-size = 2147483647

pool-resize-quantity = 0

total-beans-created = 255

The monitoring command below gives the bean pool statistics for a stateless bean.

asadmin get --user admin --host e4800-241-a --port 4848 \
  -m test.application.testEjbMon.ejb-module.slsb.stateless-session-bean.slsb.bean-pool.*

idle-timeout-in-seconds = 200

steady-pool-size = 32

total-beans-destroyed = 12

num-threads-waiting = 0

num-beans-in-pool = 4

max-pool-size = 1024

pool-resize-quantity = 12

total-beans-created = 42
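To chart these values over time, it can help to turn each sample into numbers. The following is a minimal sketch, assuming the output has been captured as text in the "name = value" form shown above. The class name BeanCacheStats and its methods are hypothetical helpers and not part of GlassFish Server.

```java
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical helper: not part of GlassFish Server.
class BeanCacheStats {

    /** Parses "name = value" lines; lines without '=' or with non-numeric values are skipped. */
    static Map<String, Long> parse(String output) {
        Map<String, Long> stats = new LinkedHashMap<>();
        for (String line : output.split("\\R")) {
            int eq = line.indexOf('=');
            if (eq < 0) {
                continue;
            }
            String name = line.substring(0, eq).trim();
            String value = line.substring(eq + 1).trim();
            try {
                stats.put(name, Long.parseLong(value));
            } catch (NumberFormatException e) {
                // Not a numeric statistic; ignore it in this sketch.
            }
        }
        return stats;
    }

    /** Returns cache-hits / (cache-hits + cache-misses), or 0.0 when there were no lookups. */
    static double cacheHitRatio(Map<String, Long> stats) {
        long hits = stats.getOrDefault("cache-hits", 0L);
        long misses = stats.getOrDefault("cache-misses", 0L);
        long total = hits + misses;
        return total == 0 ? 0.0 : (double) hits / total;
    }

    public static void main(String[] args) {
        // Values copied from the stateful session bean sample above.
        String sample = String.join("\n",
                "cache-misses = 0",
                "num-passivations = 0",
                "cache-hits = 59",
                "max-beans-in-cache = 4096");
        Map<String, Long> stats = parse(sample);
        System.out.println("hit ratio = " + cacheHitRatio(stats));
        System.out.println("passivations = " + stats.get("num-passivations"));
    }
}
```

Recording the hit ratio and the passivation count at regular intervals gives the trend data that the tuning decisions in this section rely on.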

Tuning the bean involves charting the behavior of the cache and pool for the bean in

question over a period of time.

If too many passivations are happening and the JVM heap remains fairly small, then the max-cache-size or the cache-idle-timeout-in-seconds can be increased. If garbage collection is happening too frequently, and the pool size is growing, but the cache hit rate is small, then the pool-idle-timeout-in-seconds can be reduced to destroy the instances.

Note: Specifying a max-pool-size of zero (0) means that the pool is unbounded. The pooled beans remain in memory unless they are removed by specifying a small interval for pool-idle-timeout-in-seconds. For production systems, specifying the pool as unbounded is NOT recommended.

Monitoring Individual EJB Components

To gather method invocation statistics for all methods in a bean, use the following command:

asadmin get -m monitorableObject.*

where monitorableObject is a fully-qualified identifier from the hierarchy of objects that can be monitored, shown below.

serverInstance.application.applicationName.ejb-module.moduleName

where moduleName is x_jar for module x.jar.

■.stateless-session-bean.beanName
    .bean-pool
    .bean-method.methodName

■.stateful-session-bean.beanName
    .bean-cache
    .bean-method.methodName

■.entity-bean.beanName
    .bean-cache
    .bean-pool
    .bean-method.methodName

■.message-driven-bean.beanName
    .bean-pool
    .bean-method.methodName (methodName = onMessage)

For standalone beans, use this pattern:

serverInstance.application.applicationName.standalone-ejb-module.moduleName

The possible identifiers are the same as for ejb-module.

For example, to get statistics for a method in an entity bean, use this command:

asadmin get -m serverInstance.application.appName.ejb-module.moduleName.entity-bean.beanName.bean-method.methodName.*
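When many beans are monitored, it is easy to mistype these dotted names. The following is a minimal sketch of building one from its parts. The class MonitorNames and the sample values server, myApp, orders.jar, ItemEnt, and getPrice are hypothetical. Only the x.jar to x_jar rule is taken from this section.

```java
// Hypothetical helper: not part of GlassFish Server.
class MonitorNames {

    /** Converts a module file name such as "x.jar" to the monitoring form "x_jar". */
    static String moduleName(String jarFileName) {
        return jarFileName.endsWith(".jar")
                ? jarFileName.substring(0, jarFileName.length() - 4) + "_jar"
                : jarFileName;
    }

    /** Builds the pattern that selects all statistics for one method of one bean. */
    static String beanMethodPattern(String serverInstance, String appName,
                                    String jarFileName, String beanType,
                                    String beanName, String methodName) {
        return String.join(".",
                serverInstance, "application", appName,
                "ejb-module", moduleName(jarFileName),
                beanType, beanName,
                "bean-method", methodName, "*");
    }

    public static void main(String[] args) {
        // All argument values here are made up for illustration.
        String pattern = beanMethodPattern(
                "server", "myApp", "orders.jar", "entity-bean", "ItemEnt", "getPrice");
        System.out.println("asadmin get -m " + pattern);
    }
}
```

The generated string follows the same serverInstance.application.appName.ejb-module.moduleName layout as the command above.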

For more information about administering the monitoring service in general, see

"Administering the Monitoring Service" in GlassFish Server Open Source Edition

Administration Guide. For information about viewing comprehensive EJB monitoring

statistics, see "EJB Statistics" in GlassFish Server Open Source Edition Administration

Guide.

To configure EJB monitoring using the GlassFish Server Administration Console,

navigate to the Configurations>configuration-name>Monitoring node. After

configuring monitoring, you can view monitoring statistics by navigating to the server

(Admin Server) node and then selecting the Monitor tab. Refer to the Administration

Console online help for instructions on each of these procedures.

Alternatively, to list EJB statistics, use the asadmin list subcommand. For more information, see list(1).

For statistics on stateful session bean passivations, use this command:

asadmin get -m serverInstance.application.appName.ejb-module.moduleName.stateful-session-bean.beanName.bean-cache.*

From the attribute values that are returned, examine the following:

num-passivations
num-passivation-errors
num-passivation-success

General Guidelines

The following guidelines can improve performance of EJB components. Keep in mind

that decomposing an application into many EJB components creates overhead and can

degrade performance. EJB components are not simply Java objects. They are

components with semantics for remote call interfaces, security, and transactions, as

well as properties and methods.

Use High Performance Beans

Use high-performance beans as much as possible to improve the overall performance

of your application. For more information, see Tuning Tips for Specific Types of EJB

Components.

The types of EJB components are listed below, from the highest performance to the

lowest:

1. Stateless Session Beans and Message Driven Beans

2. Stateful Session Beans

3. Container Managed Persistence (CMP) entity beans configured as read-only

4. Bean Managed Persistence (BMP) entity beans configured as read-only


5. CMP beans

6. BMP beans

For more information about configuring high availability session persistence, see

"Configuring High Availability Session Persistence and Failover" in GlassFish Server

Open Source Edition High Availability Administration Guide. To configure EJB beans

using the GlassFish Server Administration Console, navigate to the

Configurations>configuration-name>EJB Container node and then refer to the

Administration Console online help for detailed instructions.

Use Caching

Caching can greatly improve performance when used wisely. For example:

■Cache EJB references: To avoid a JNDI lookup for every request, cache EJB references in servlets.

■Cache home interfaces: Since repeated lookups to a home interface can be expensive, cache references to EJBHomes in the init() methods of servlets.

■Cache EJB resources: Use setSessionContext() or ejbCreate() to cache bean resources. This is again an example of using bean lifecycle methods to perform application actions only once where possible. Remember to release acquired resources in the ejbRemove() method.
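The idea behind the first two items can be sketched in plain Java: look a reference up once, then serve it from memory. In the sketch below, the class ReferenceCache is hypothetical, and the Function passed to it stands in for a real JNDI lookup so that the example runs without an application server. In a servlet, such a cache would typically be created or filled in init().

```java
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.atomic.AtomicInteger;
import java.util.function.Function;

// Hypothetical helper: the lookup function is an assumed stand-in for a JNDI lookup.
class ReferenceCache {
    private final Map<String, Object> cache = new ConcurrentHashMap<>();
    private final Function<String, Object> lookup;

    ReferenceCache(Function<String, Object> lookup) {
        this.lookup = lookup;
    }

    /** Returns the cached reference, performing the expensive lookup only on first use. */
    Object get(String jndiName) {
        return cache.computeIfAbsent(jndiName, lookup);
    }

    public static void main(String[] args) {
        AtomicInteger lookups = new AtomicInteger();
        ReferenceCache refs = new ReferenceCache(name -> {
            lookups.incrementAndGet();   // counts how often the "expensive" call runs
            return new Object();         // placeholder for an EJB home or reference
        });

        // The JNDI name below is made up for illustration.
        Object first = refs.get("java:comp/env/ejb/ExampleHome");
        Object second = refs.get("java:comp/env/ejb/ExampleHome");

        System.out.println("same reference: " + (first == second));
        System.out.println("lookups performed: " + lookups.get());
    }
}
```

ConcurrentHashMap is used here because servlet instances serve requests on multiple threads. A plain field assigned once in init() achieves the same effect for a single reference.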

Use the Appropriate Stubs

The stub classes needed by EJB applications are generated dynamically at runtime

when an EJB client needs them. This means that it is not necessary to generate the

stubs or retrieve the client JAR file when deploying an application with remote EJB