Programmer's Guide Prog Master

User Manual:

Open the PDF directly: View PDF ![]() .

.

Page Count: 407 [warning: Documents this large are best viewed by clicking the View PDF Link!]

- Introduction

- Overview

- Environment Abstraction Layer

- Service Cores

- Ring Library

- Mempool Library

- Mbuf Library

- Poll Mode Driver

- Generic flow API (rte_flow)

- Switch Representation within DPDK Applications

- Traffic Metering and Policing API

- Traffic Management API

- Wireless Baseband Device Library

- Cryptography Device Library

- Compression Device Library

- Security Library

- Rawdevice Library

- Link Bonding Poll Mode Driver Library

- Timer Library

- Hash Library

- Elastic Flow Distributor Library

- Membership Library

- LPM Library

- LPM6 Library

- Flow Classification Library

- Packet Distributor Library

- Reorder Library

- IP Fragmentation and Reassembly Library

- Generic Receive Offload Library

- Generic Segmentation Offload Library

- The librte_pdump Library

- Multi-process Support

- Kernel NIC Interface

- Thread Safety of DPDK Functions

- Event Device Library

- Event Ethernet Rx Adapter Library

- Event Ethernet Tx Adapter Library

- Event Timer Adapter Library

- Event Crypto Adapter Library

- Quality of Service (QoS) Framework

- Power Management

- Packet Classification and Access Control

- Packet Framework

- Vhost Library

- Metrics Library

- Berkeley Packet Filter Library

- Source Organization

- Development Kit Build System

- Development Kit Root Makefile Help

- Extending the DPDK

- Building Your Own Application

- External Application/Library Makefile help

- Performance Optimization Guidelines

- Writing Efficient Code

- Profile Your Application

- Glossary

Programmer’s Guide

Release 18.11.0-rc5

November 25, 2018

CONTENTS

1 Introduction 1

1.1 Documentation Roadmap ............................... 1

1.2 Related Publications .................................. 2

2 Overview 3

2.1 Development Environment .............................. 3

2.2 Environment Abstraction Layer ............................ 4

2.3 Core Components ................................... 4

2.4 Ethernet* Poll Mode Driver Architecture ....................... 6

2.5 Packet Forwarding Algorithm Support ........................ 6

2.6 librte_net ........................................ 6

3 Environment Abstraction Layer 7

3.1 EAL in a Linux-userland Execution Environment .................. 7

3.2 Memory Segments and Memory Zones (memzone) ................ 14

3.3 Multiple pthread .................................... 14

3.4 Malloc .......................................... 17

4 Service Cores 22

4.1 Service Core Initialization ............................... 22

4.2 Enabling Services on Cores ............................. 22

4.3 Service Core Statistics ................................ 23

5 Ring Library 24

5.1 References for Ring Implementation in FreeBSD* ................. 25

5.2 Lockless Ring Buffer in Linux* ............................ 25

5.3 Additional Features .................................. 25

5.4 Use Cases ....................................... 25

5.5 Anatomy of a Ring Buffer ............................... 25

5.6 References ....................................... 34

6 Mempool Library 36

6.1 Cookies ......................................... 36

6.2 Stats .......................................... 36

6.3 Memory Alignment Constraints ............................ 36

6.4 Local Cache ...................................... 37

6.5 Mempool Handlers .................................. 38

6.6 Use Cases ....................................... 39

7 Mbuf Library 40

i

7.1 Design of Packet Buffers ............................... 40

7.2 Buffers Stored in Memory Pools ........................... 42

7.3 Constructors ...................................... 42

7.4 Allocating and Freeing mbufs ............................. 42

7.5 Manipulating mbufs .................................. 42

7.6 Meta Information .................................... 42

7.7 Direct and Indirect Buffers .............................. 44

7.8 Debug ......................................... 45

7.9 Use Cases ....................................... 45

8 Poll Mode Driver 46

8.1 Requirements and Assumptions ........................... 46

8.2 Design Principles ................................... 47

8.3 Logical Cores, Memory and NIC Queues Relationships .............. 48

8.4 Device Identification, Ownership and Configuration ................ 48

8.5 Poll Mode Driver API ................................. 52

9 Generic flow API (rte_flow) 58

9.1 Overview ........................................ 58

9.2 Flow rule ........................................ 58

9.3 Rules management .................................. 92

9.4 Isolated mode ..................................... 95

9.5 Verbose error reporting ................................ 96

9.6 Helpers ......................................... 97

9.7 Caveats ......................................... 97

9.8 PMD interface ..................................... 98

9.9 Device compatibility .................................. 98

9.10 Future evolutions ...................................100

10 Switch Representation within DPDK Applications 102

10.1 Introduction .......................................102

10.2 Port Representors ...................................103

10.3 Basic SR-IOV .....................................104

10.4 Controlled SR-IOV ...................................105

10.5 Flow API (rte_flow) ..................................108

10.6 Switching Examples ..................................113

11 Traffic Metering and Policing API 116

11.1 Overview ........................................116

11.2 Configuration steps ..................................116

11.3 Run-time processing .................................116

12 Traffic Management API 118

12.1 Overview ........................................118

12.2 Capability API .....................................118

12.3 Scheduling Algorithms ................................119

12.4 Traffic Shaping .....................................119

12.5 Congestion Management ...............................119

12.6 Packet Marking ....................................120

12.7 Steps to Setup the Hierarchy .............................120

13 Wireless Baseband Device Library 122

ii

13.1 Design Principles ...................................122

13.2 Device Management .................................122

13.3 Device Operation Capabilities ............................124

13.4 Operation Processing .................................126

13.5 Sample code ......................................133

14 Cryptography Device Library 135

14.1 Design Principles ...................................135

14.2 Device Management .................................135

14.3 Device Features and Capabilities ..........................137

14.4 Operation Processing .................................139

14.5 Symmetric Cryptography Support ..........................141

14.6 Sample code ......................................145

14.7 Asymmetric Cryptography ..............................148

14.8 Asymmetric crypto Sample code ...........................149

15 Compression Device Library 153

15.1 Device Management .................................153

15.2 Device Features and Capabilities ..........................154

15.3 Compression Operation ................................155

15.4 Transforms .......................................157

15.5 Compression API Hash support ...........................157

15.6 Compression API Stateless operation ........................157

15.7 Compression API Stateful operation .........................160

15.8 Burst in compression API ...............................163

15.9 Sample code ......................................164

16 Security Library 165

16.1 Design Principles ...................................165

16.2 Device Features and Capabilities ..........................169

17 Rawdevice Library 175

17.1 Introduction .......................................175

17.2 Design .........................................175

18 Link Bonding Poll Mode Driver Library 177

18.1 Link Bonding Modes Overview ............................177

18.2 Implementation Details ................................178

18.3 Using Link Bonding Devices .............................186

19 Timer Library 189

19.1 Implementation Details ................................189

19.2 Use Cases .......................................190

19.3 References .......................................190

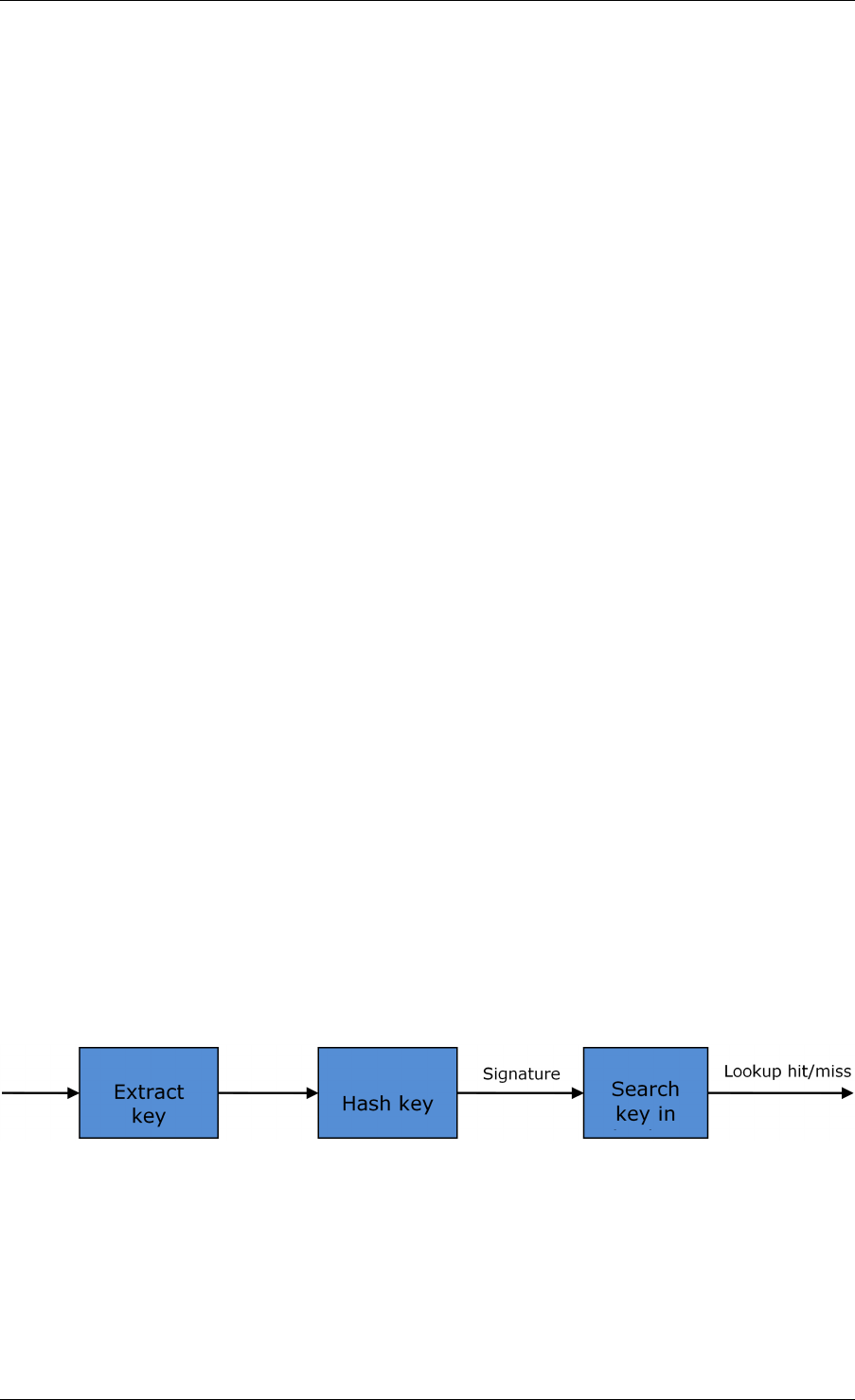

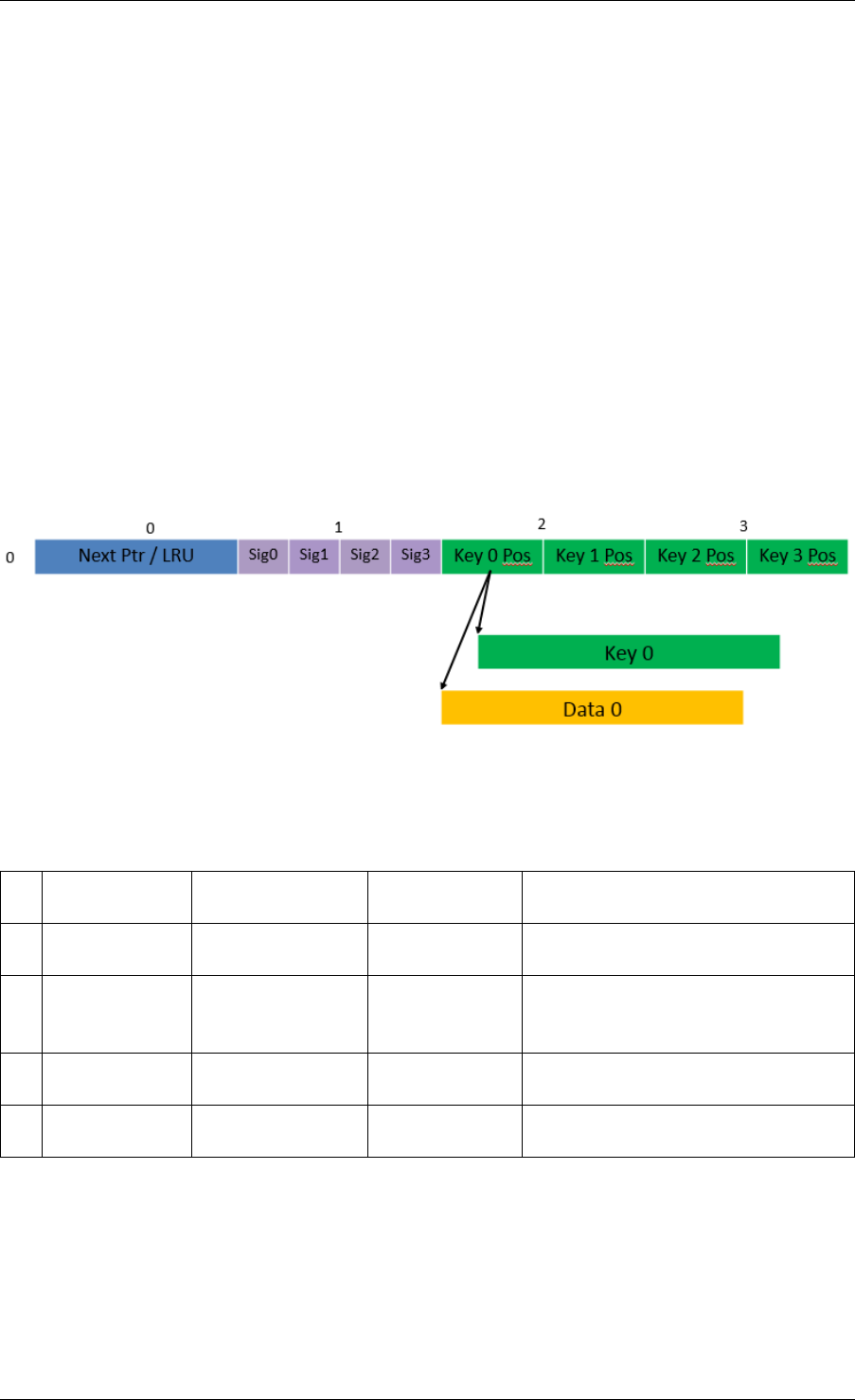

20 Hash Library 191

20.1 Hash API Overview ..................................191

20.2 Multi-process support .................................192

20.3 Multi-thread support ..................................192

20.4 Extendable Bucket Functionality support ......................193

20.5 Implementation Details (non Extendable Bucket Case) ..............193

20.6 Implementation Details (with Extendable Bucket) ..................194

20.7 Entry distribution in hash table ............................195

iii

20.8 Use Case: Flow Classification ............................196

20.9 References .......................................196

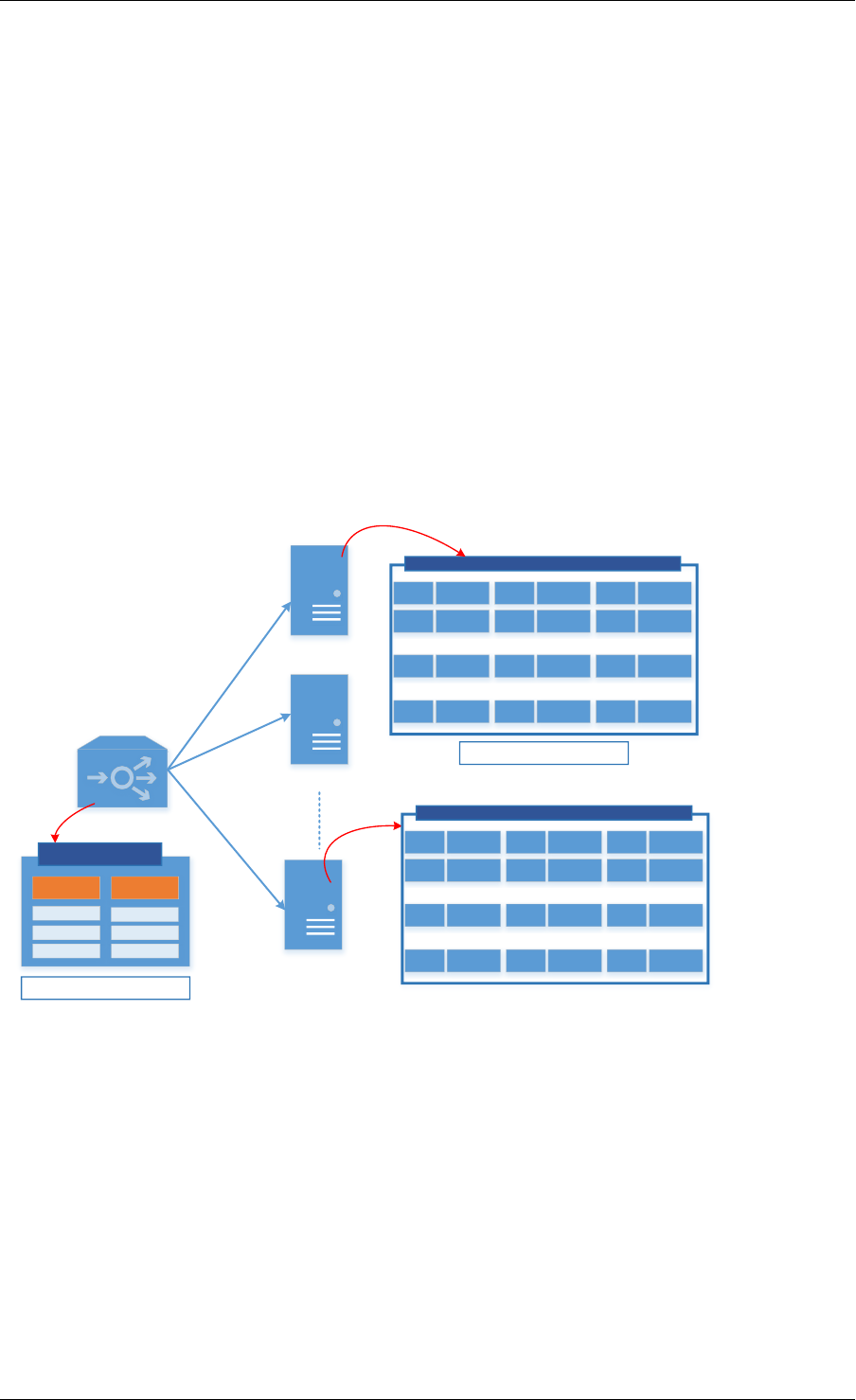

21 Elastic Flow Distributor Library 197

21.1 Introduction .......................................197

21.2 Flow Based Distribution ................................197

21.3 Example of EFD Library Usage ...........................201

21.4 Library API Overview .................................202

21.5 Library Internals ....................................203

21.6 References .......................................206

22 Membership Library 207

22.1 Introduction .......................................207

22.2 Vector of Bloom Filters ................................208

22.3 Hash-Table based Set-Summaries ..........................211

22.4 Library API Overview .................................213

22.5 References .......................................215

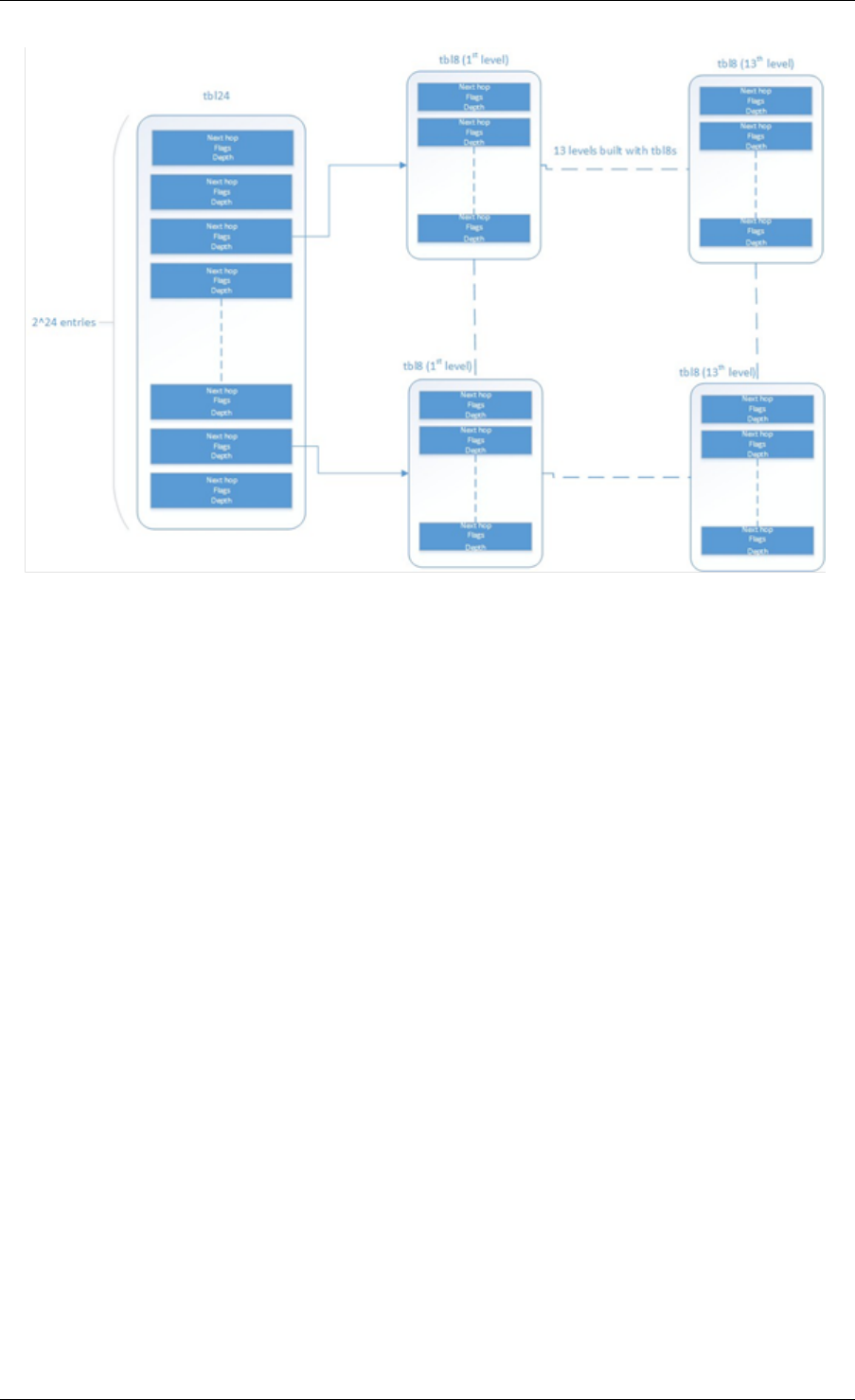

23 LPM Library 216

23.1 LPM API Overview ..................................216

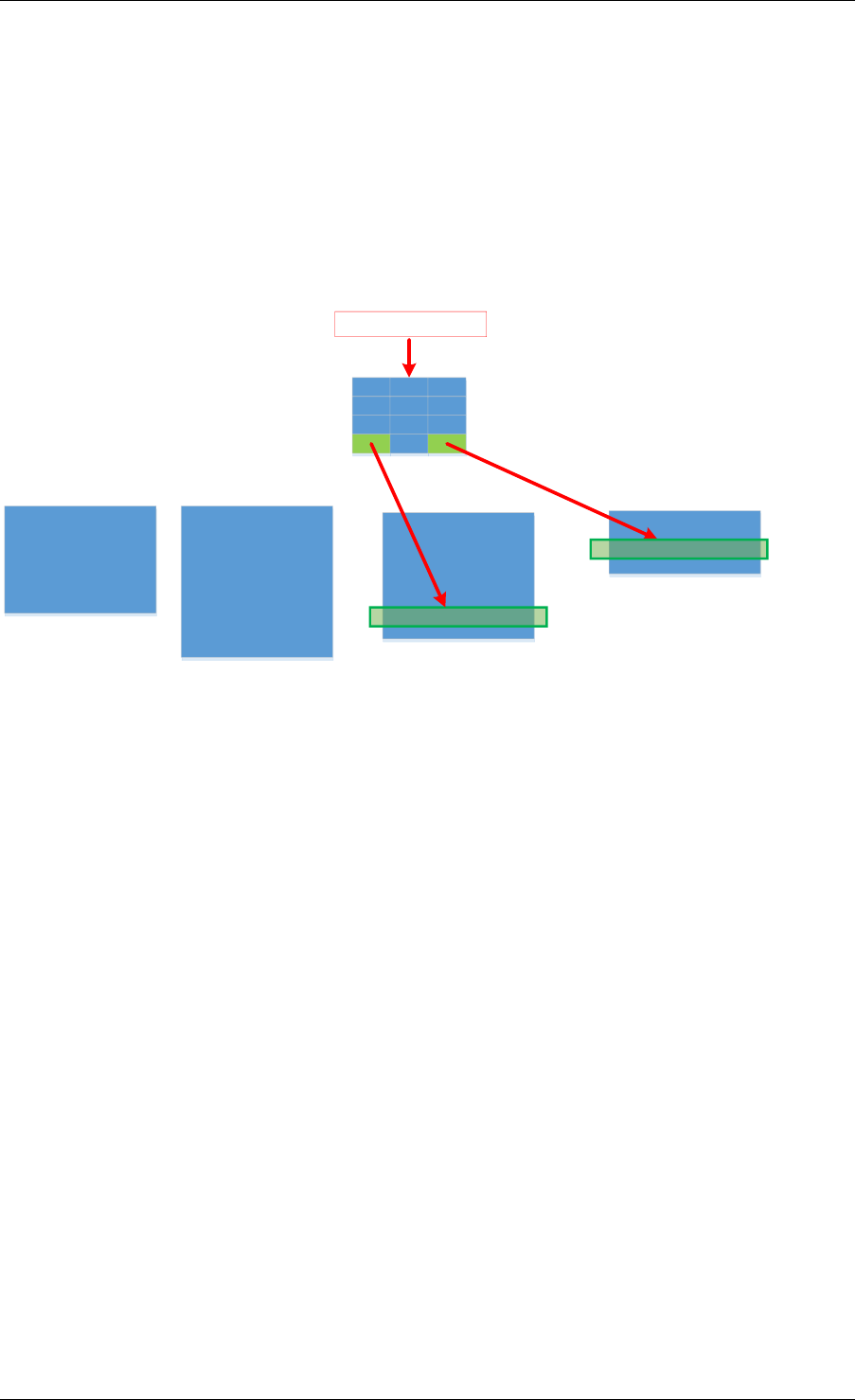

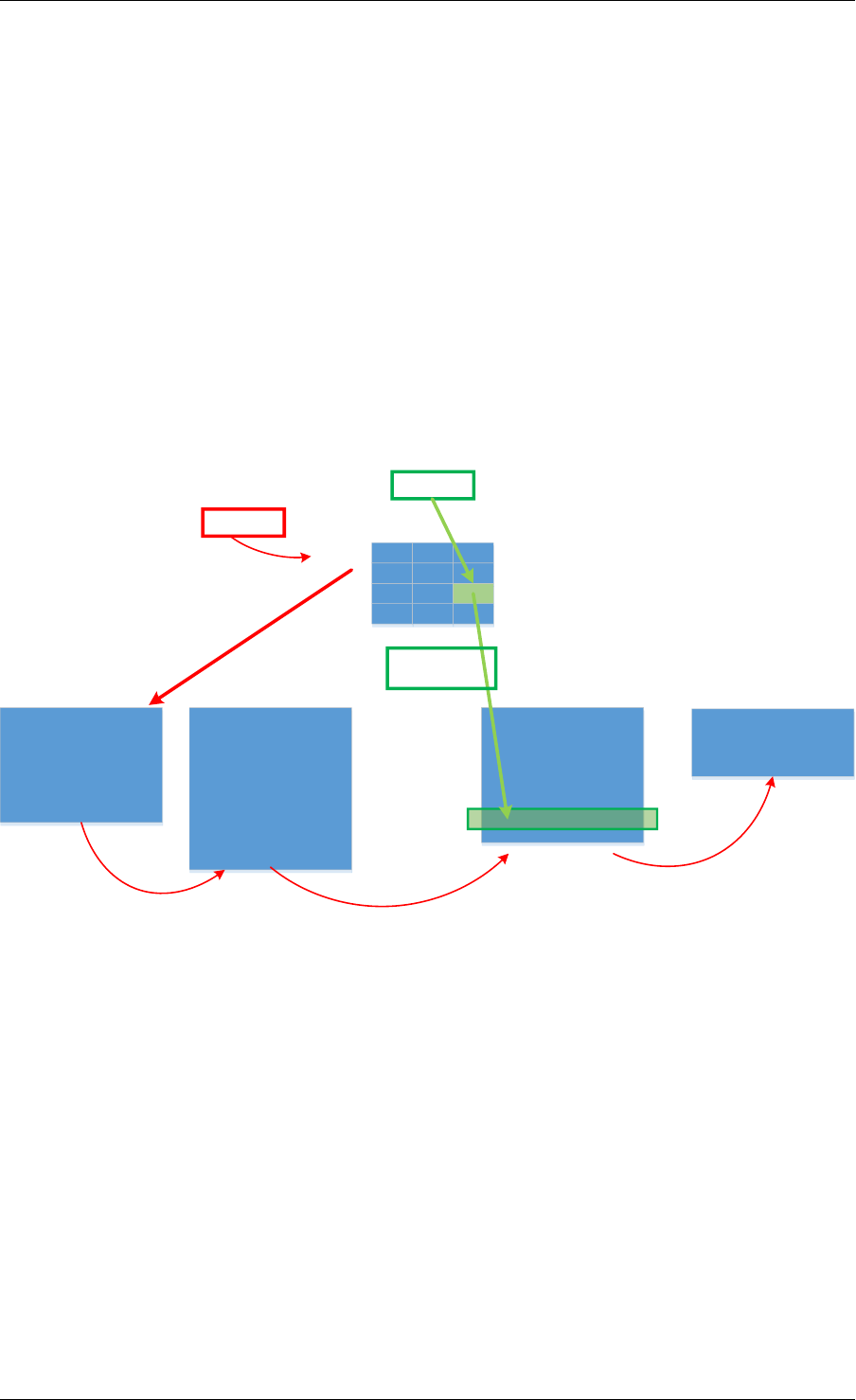

23.2 Implementation Details ................................216

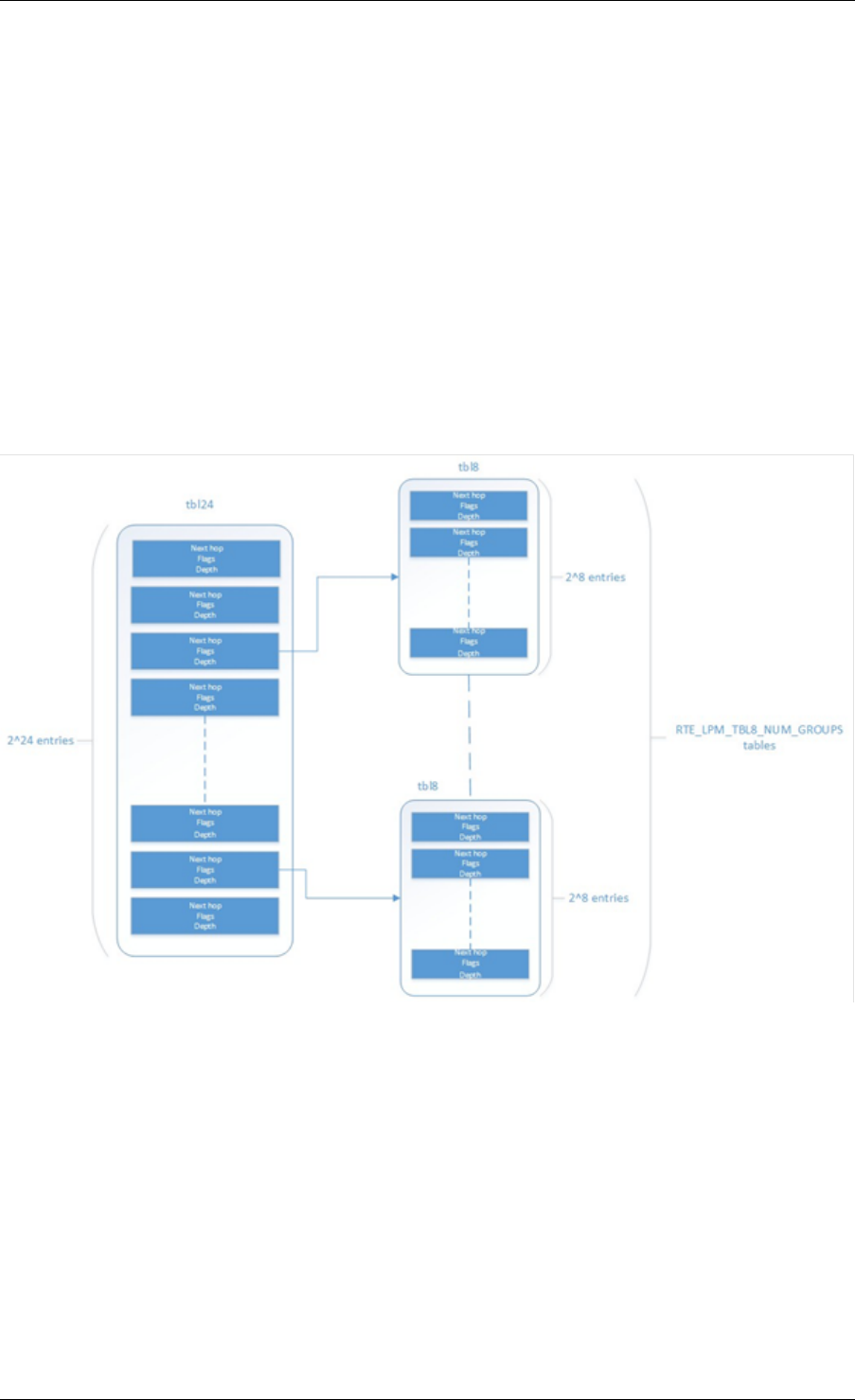

24 LPM6 Library 220

24.1 LPM6 API Overview ..................................220

24.2 Use Case: IPv6 Forwarding .............................224

25 Flow Classification Library 225

25.1 Overview ........................................225

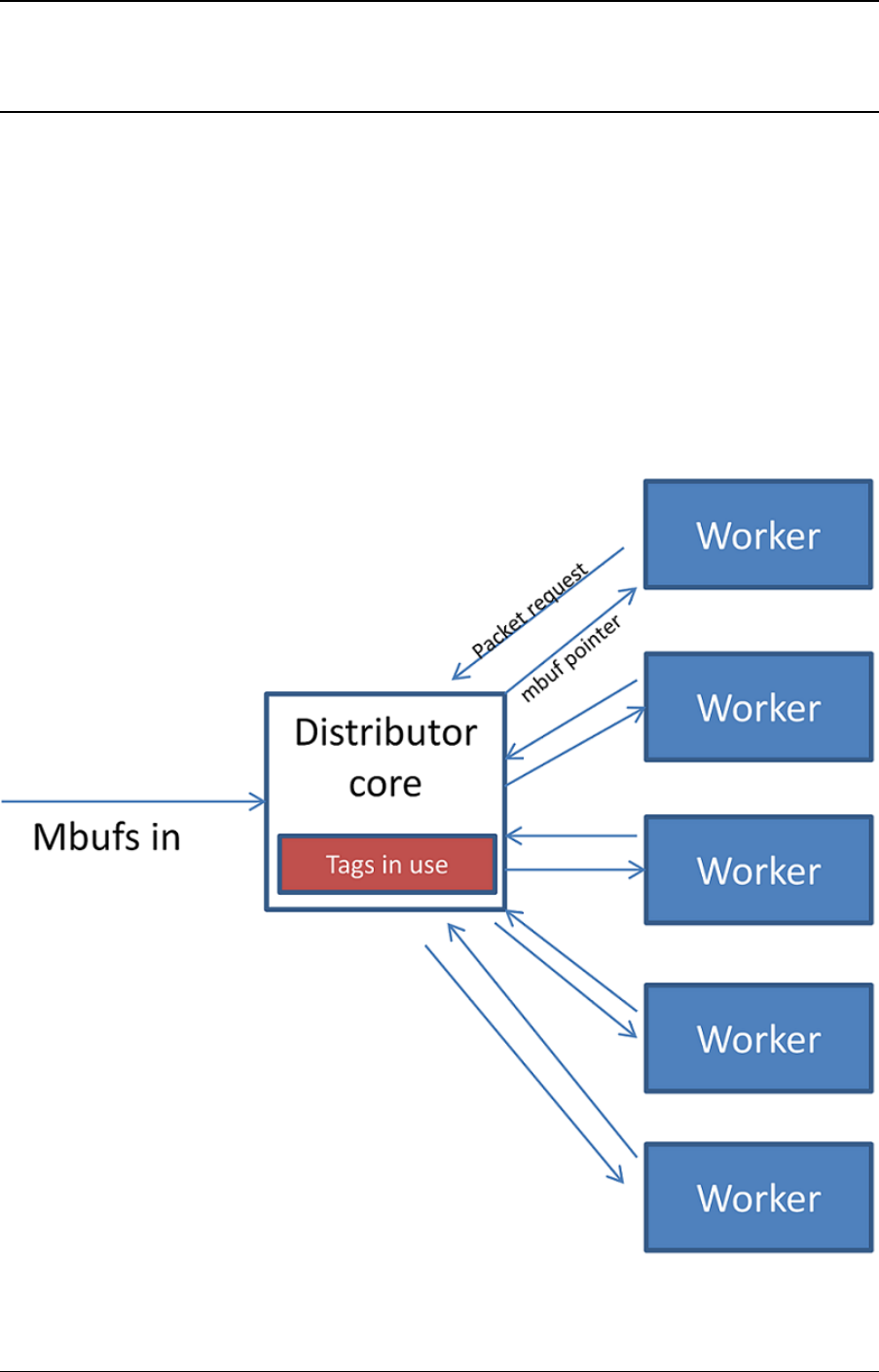

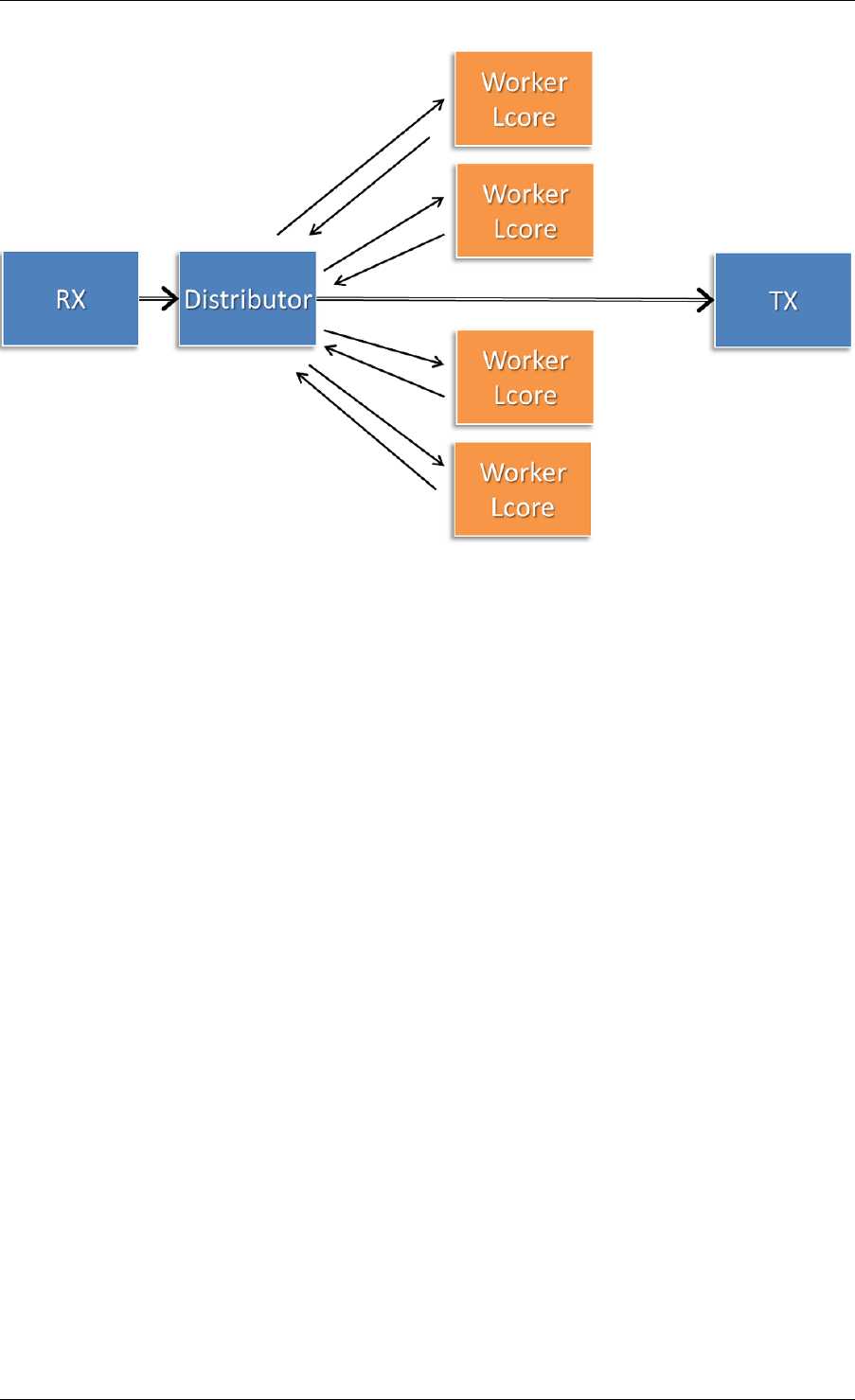

26 Packet Distributor Library 232

26.1 Distributor Core Operation ..............................233

26.2 Worker Operation ...................................234

27 Reorder Library 235

27.1 Operation ........................................235

27.2 Implementation Details ................................235

27.3 Use Case: Packet Distributor .............................236

28 IP Fragmentation and Reassembly Library 237

28.1 Packet fragmentation .................................237

28.2 Packet reassembly ...................................237

29 Generic Receive Offload Library 240

29.1 Overview ........................................240

29.2 Two Sets of API ....................................240

29.3 Reassembly Algorithm ................................241

29.4 TCP/IPv4 GRO ....................................242

29.5 VxLAN GRO ......................................242

30 Generic Segmentation Offload Library 244

30.1 Overview ........................................244

30.2 Limitations .......................................244

30.3 Packet Segmentation .................................245

iv

30.4 Supported GSO Packet Types ............................246

30.5 How to Segment a Packet ..............................247

31 The librte_pdump Library 249

31.1 Operation ........................................249

31.2 Implementation Details ................................250

31.3 Use Case: Packet Capturing .............................250

32 Multi-process Support 251

32.1 Memory Sharing ....................................251

32.2 Deployment Models ..................................253

32.3 Multi-process Limitations ...............................254

32.4 Communication between multiple processes ....................255

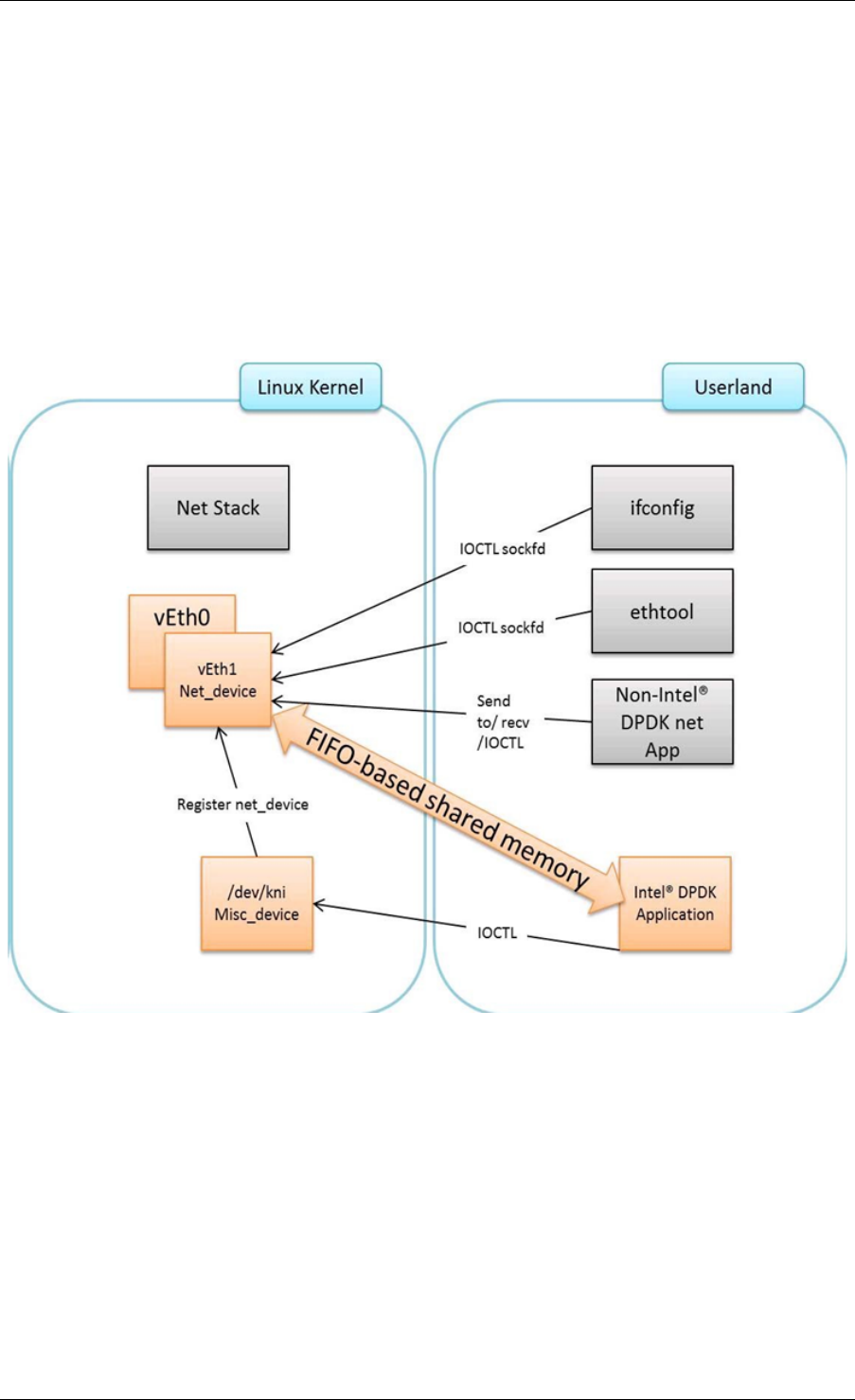

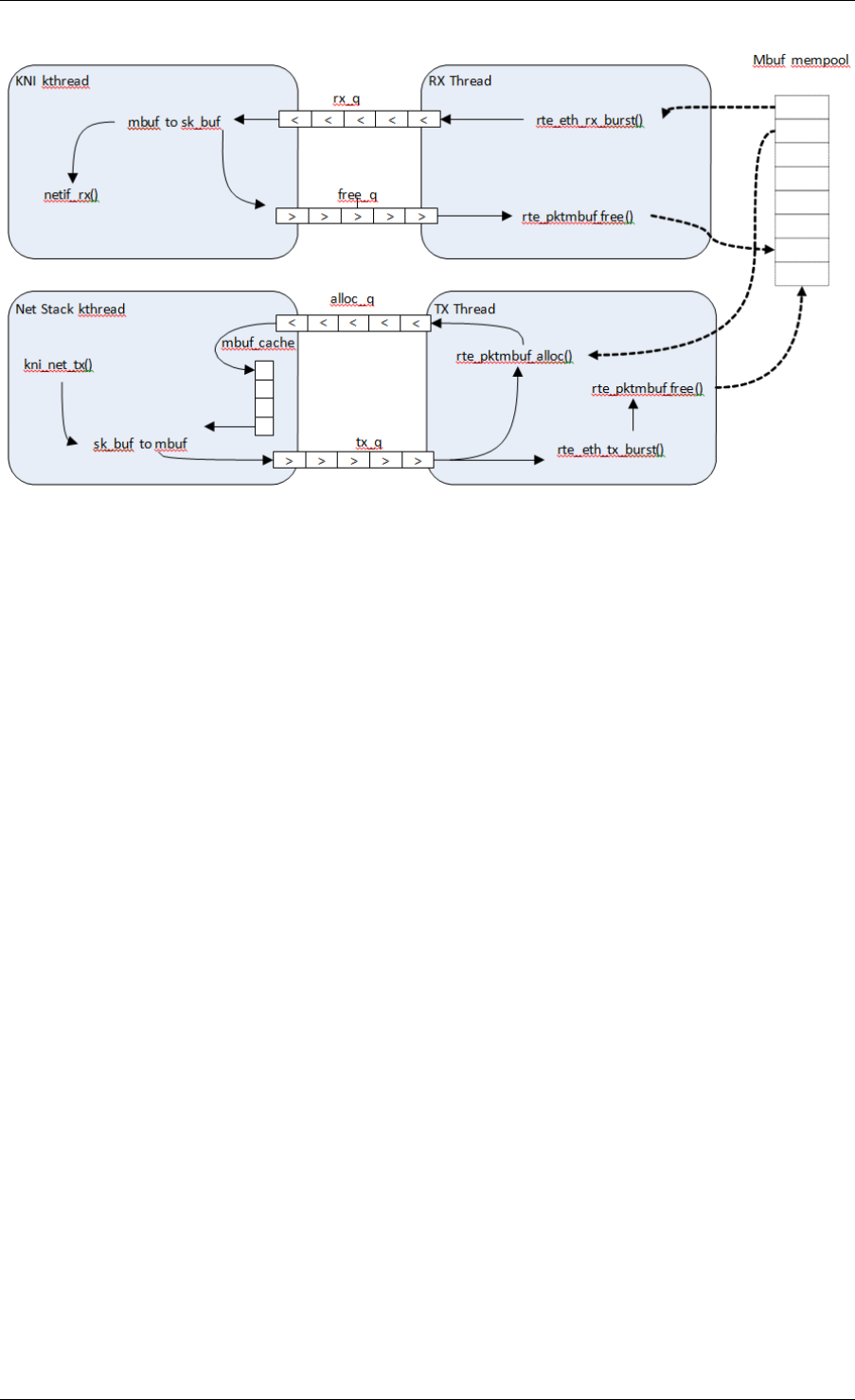

33 Kernel NIC Interface 258

33.1 The DPDK KNI Kernel Module ............................258

33.2 KNI Creation and Deletion ..............................261

33.3 DPDK mbuf Flow ...................................262

33.4 Use Case: Ingress ...................................262

33.5 Use Case: Egress ...................................263

33.6 Ethtool .........................................263

34 Thread Safety of DPDK Functions 264

34.1 Fast-Path APIs .....................................264

34.2 Performance Insensitive API .............................265

34.3 Library Initialization ..................................265

34.4 Interrupt Thread ....................................265

35 Event Device Library 266

35.1 Event struct ......................................266

35.2 API Walk-through ...................................268

35.3 Summary ........................................272

36 Event Ethernet Rx Adapter Library 273

36.1 API Walk-through ...................................273

37 Event Ethernet Tx Adapter Library 277

37.1 API Walk-through ...................................277

38 Event Timer Adapter Library 280

38.1 Event Timer struct ...................................280

38.2 API Overview .....................................281

38.3 Processing Timer Expiry Events ...........................284

38.4 Summary ........................................284

39 Event Crypto Adapter Library 285

39.1 Adapter Mode .....................................285

39.2 API Overview .....................................286

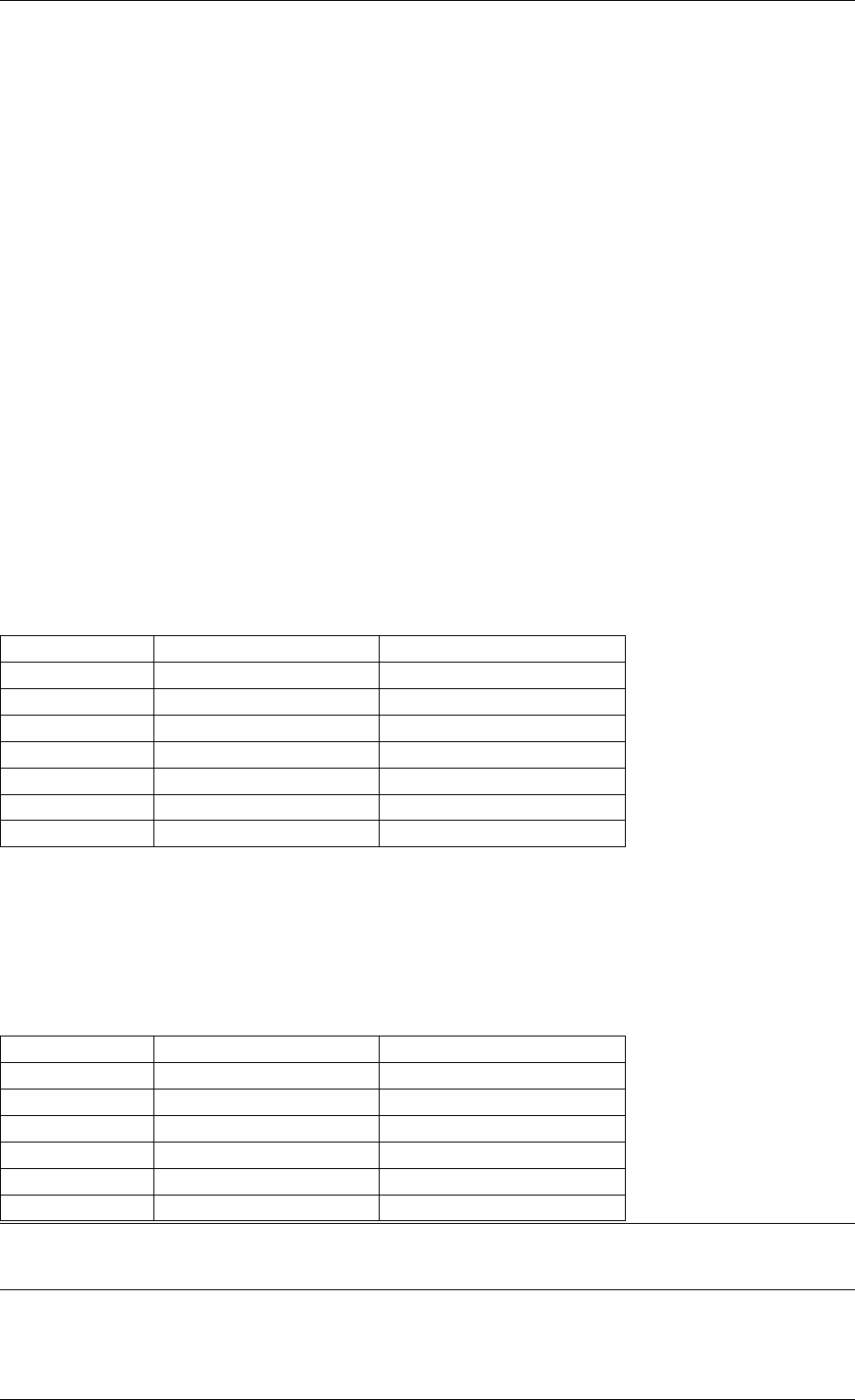

40 Quality of Service (QoS) Framework 291

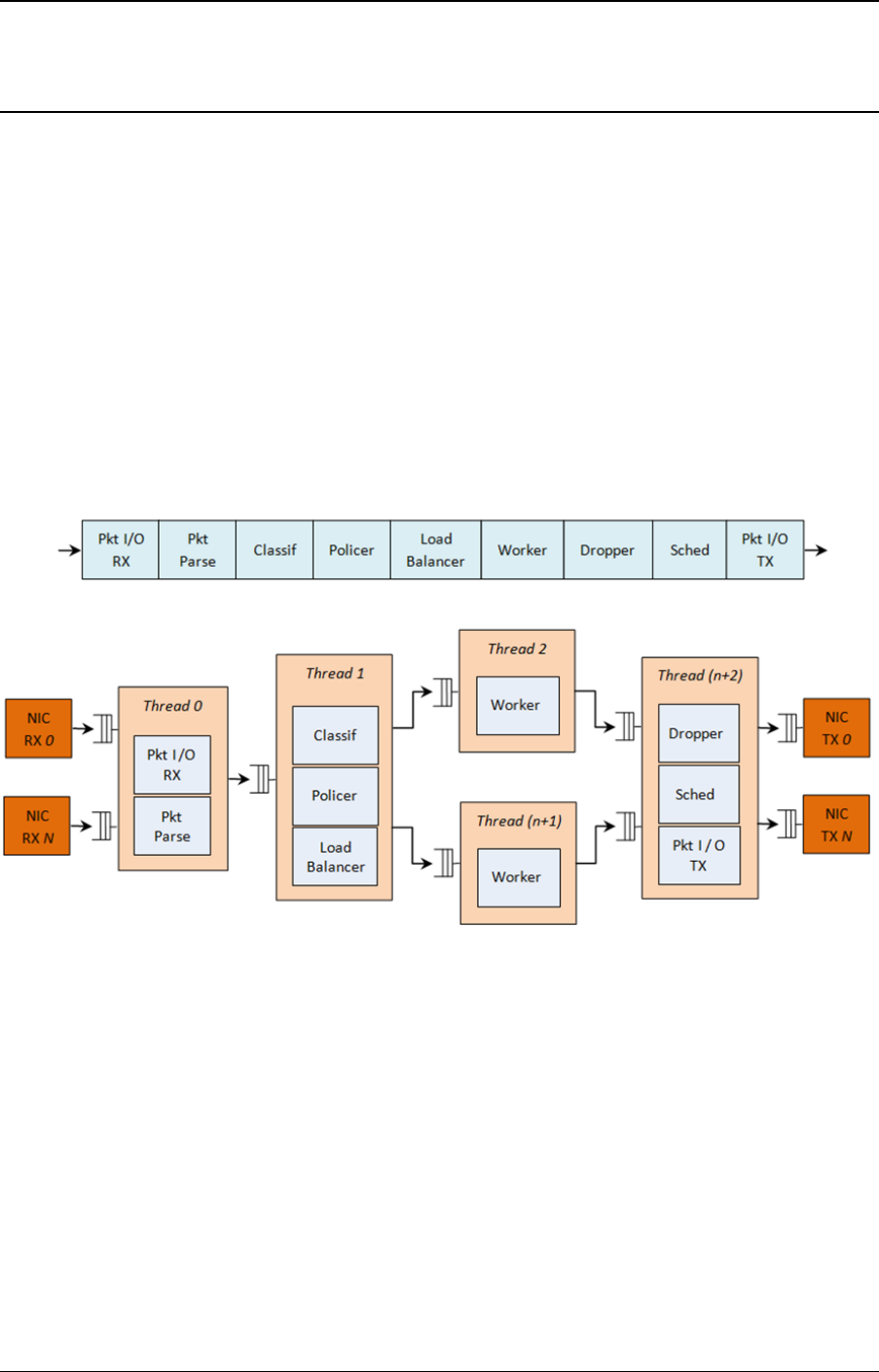

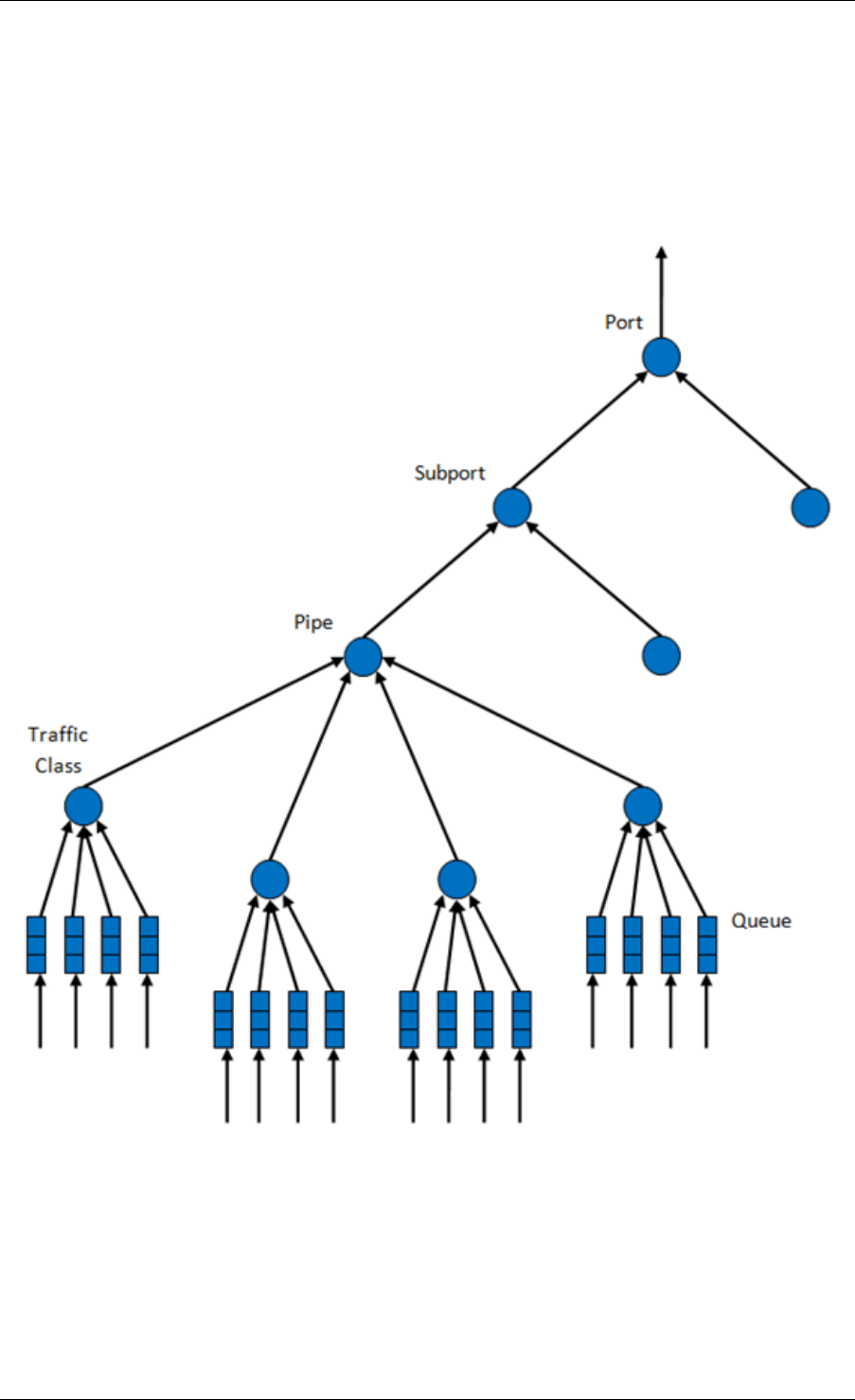

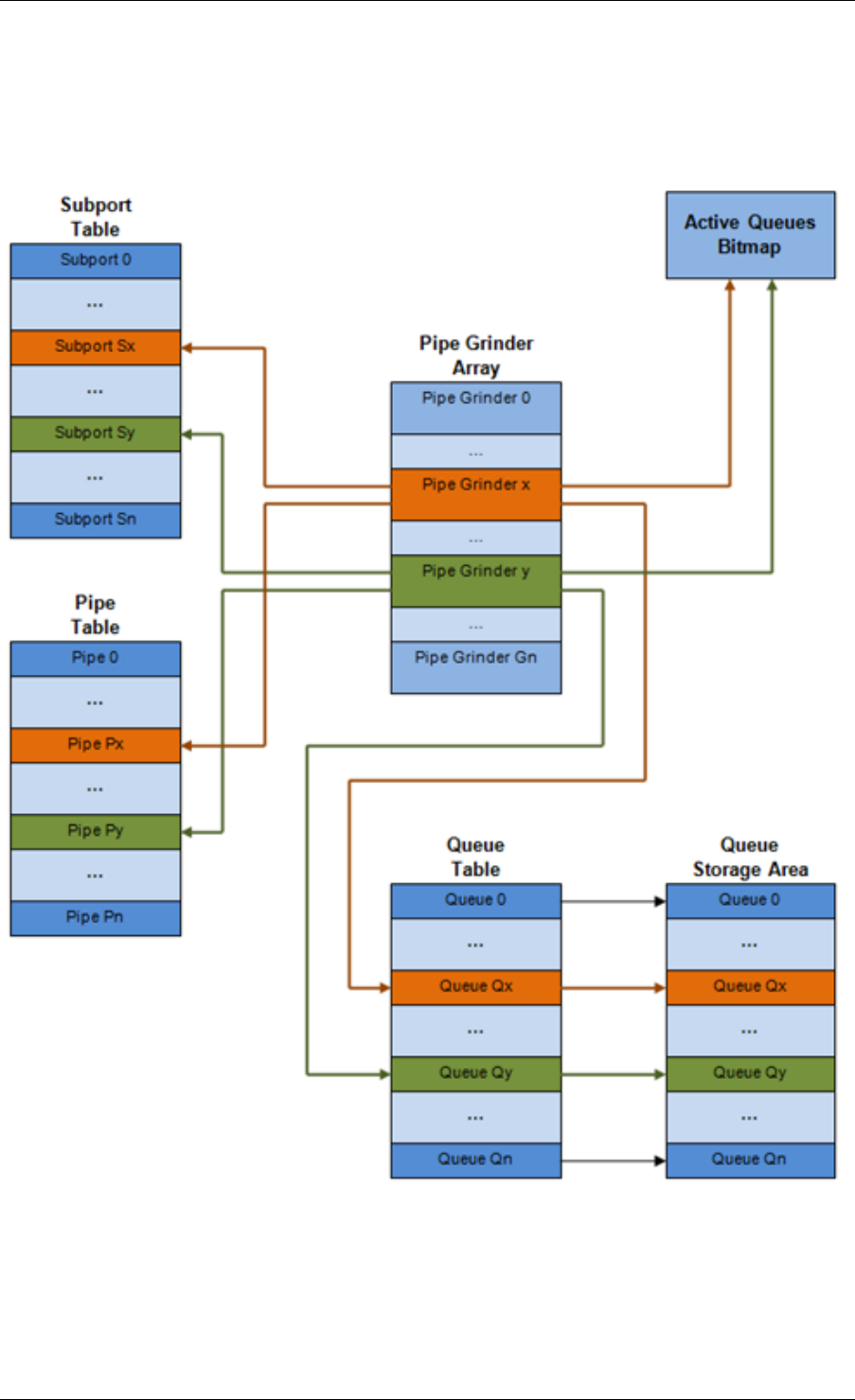

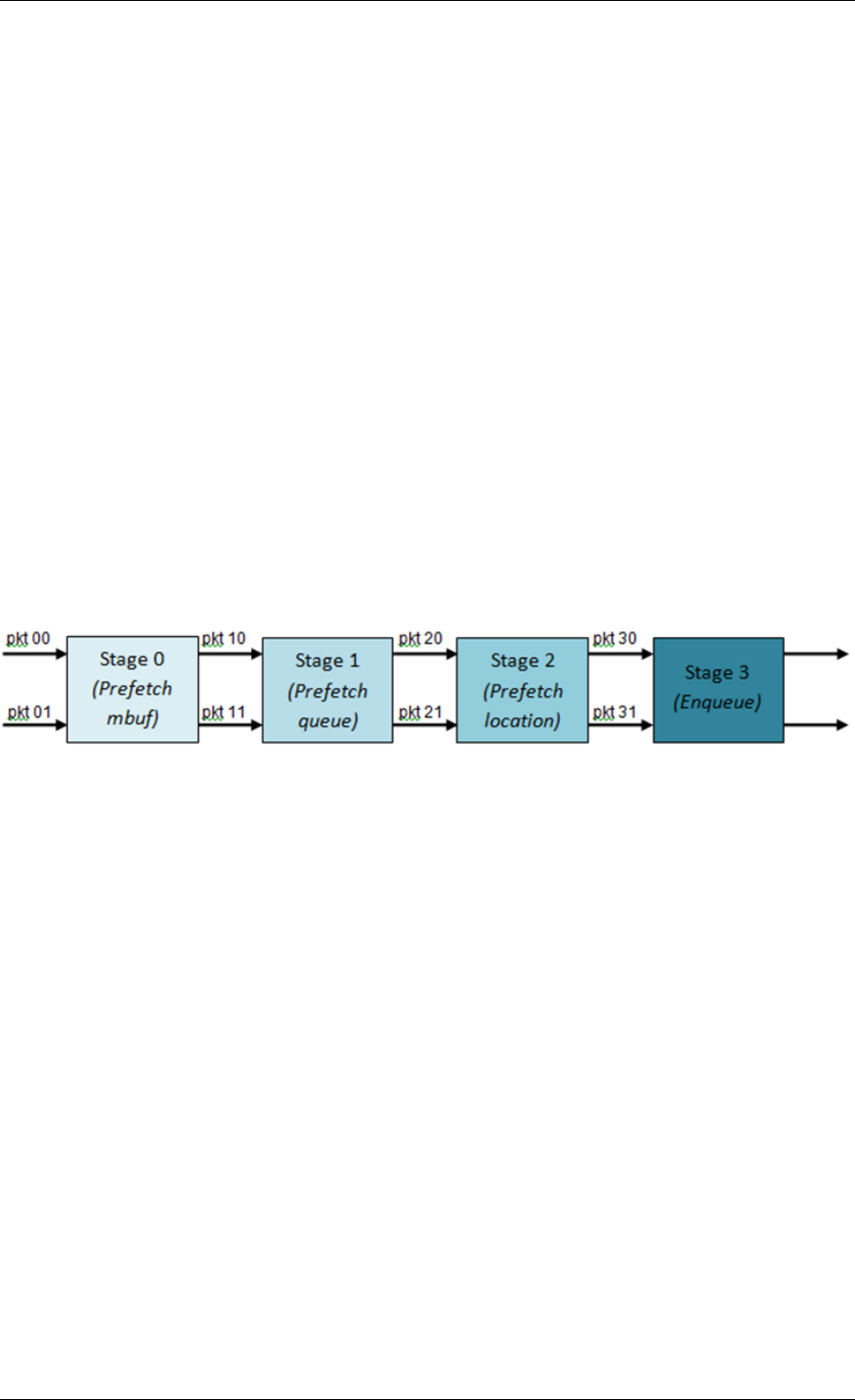

40.1 Packet Pipeline with QoS Support ..........................291

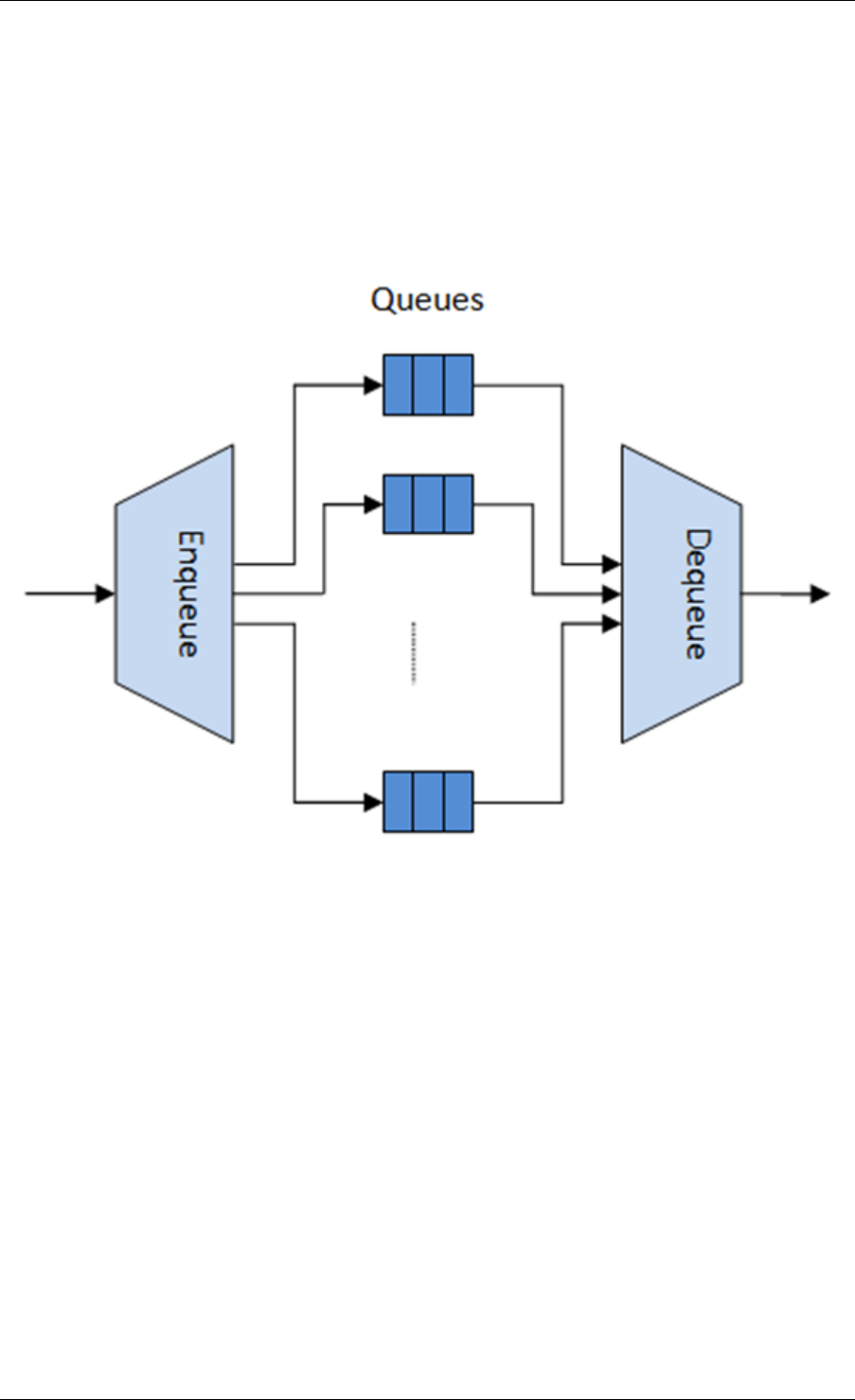

40.2 Hierarchical Scheduler ................................292

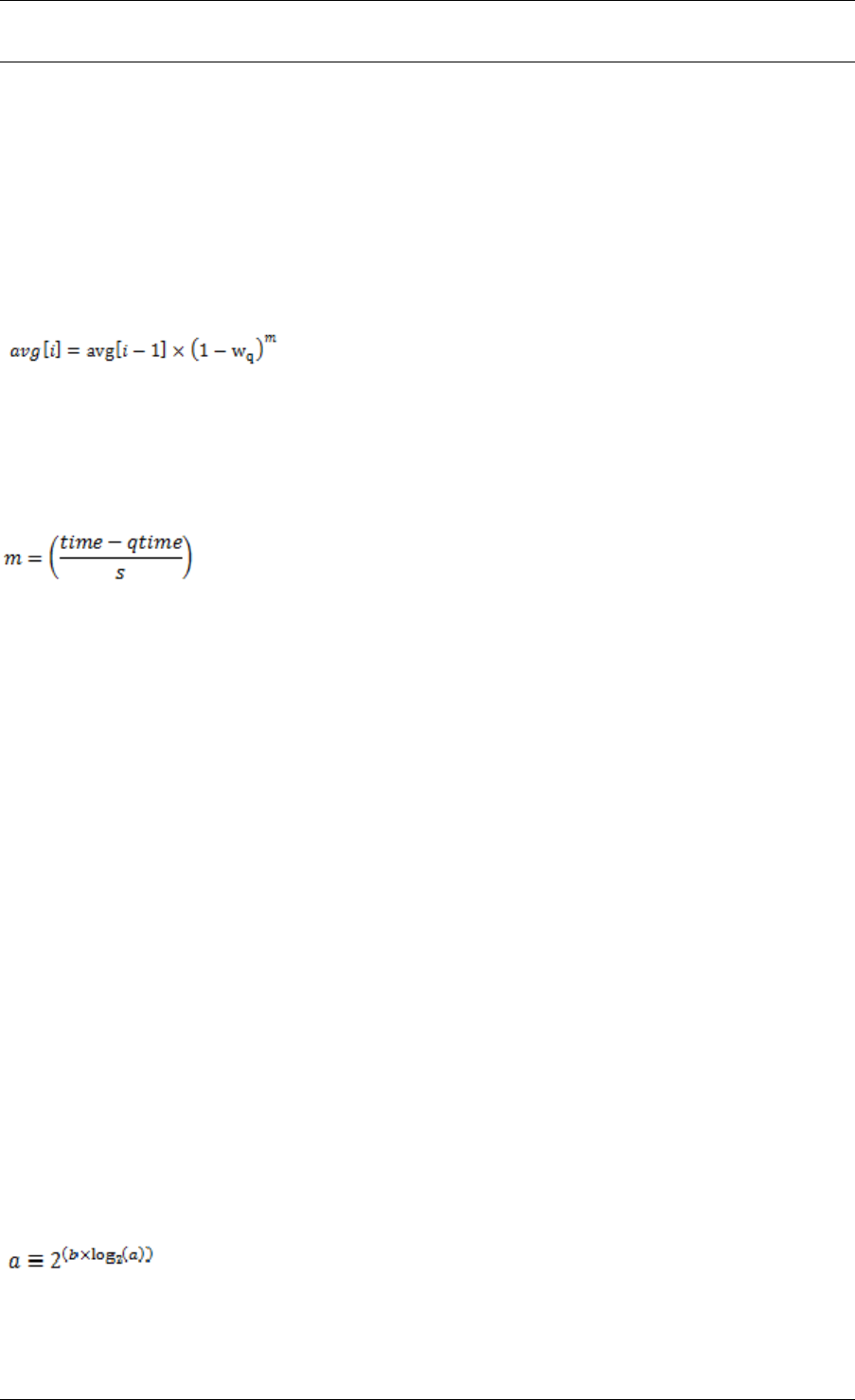

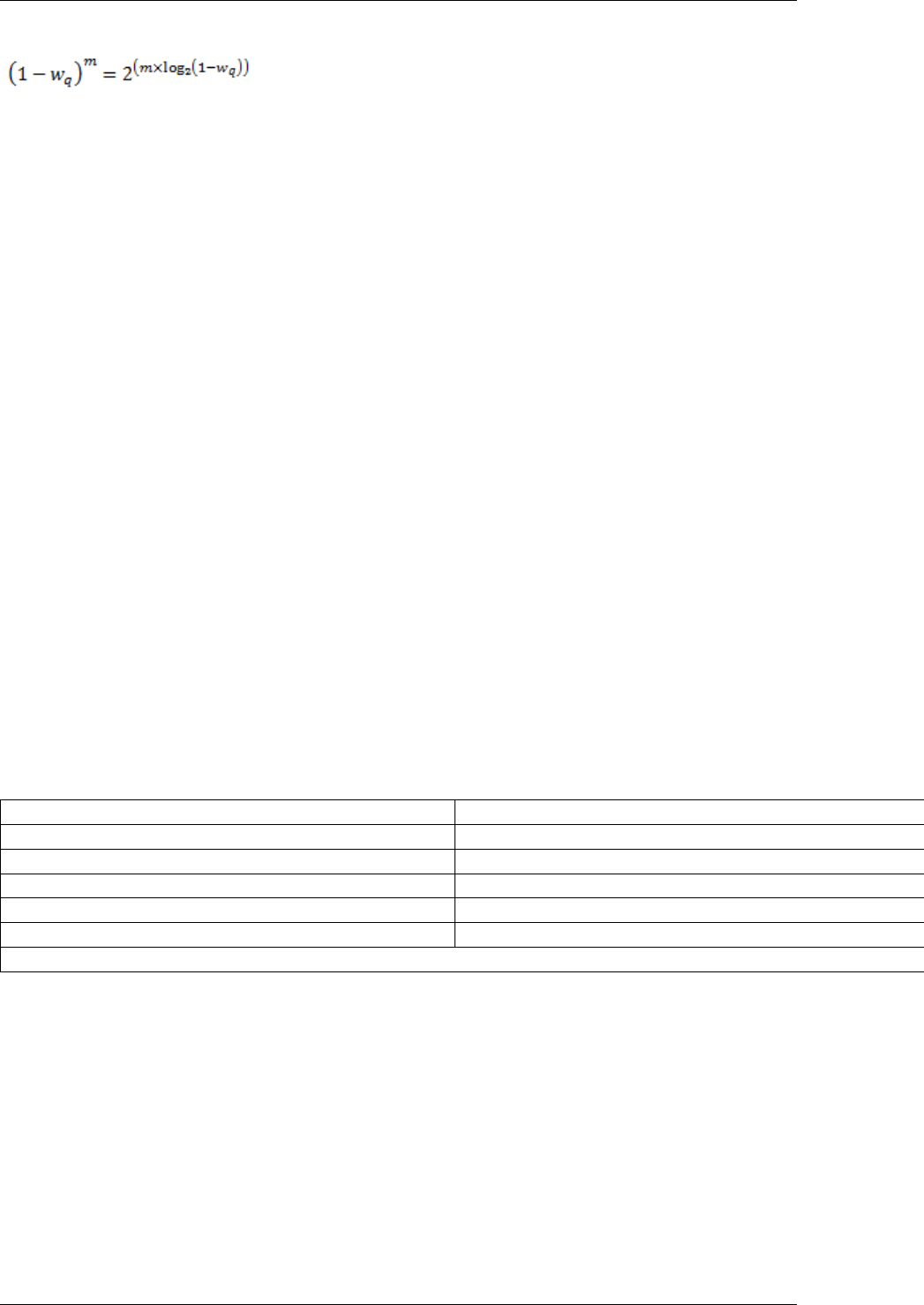

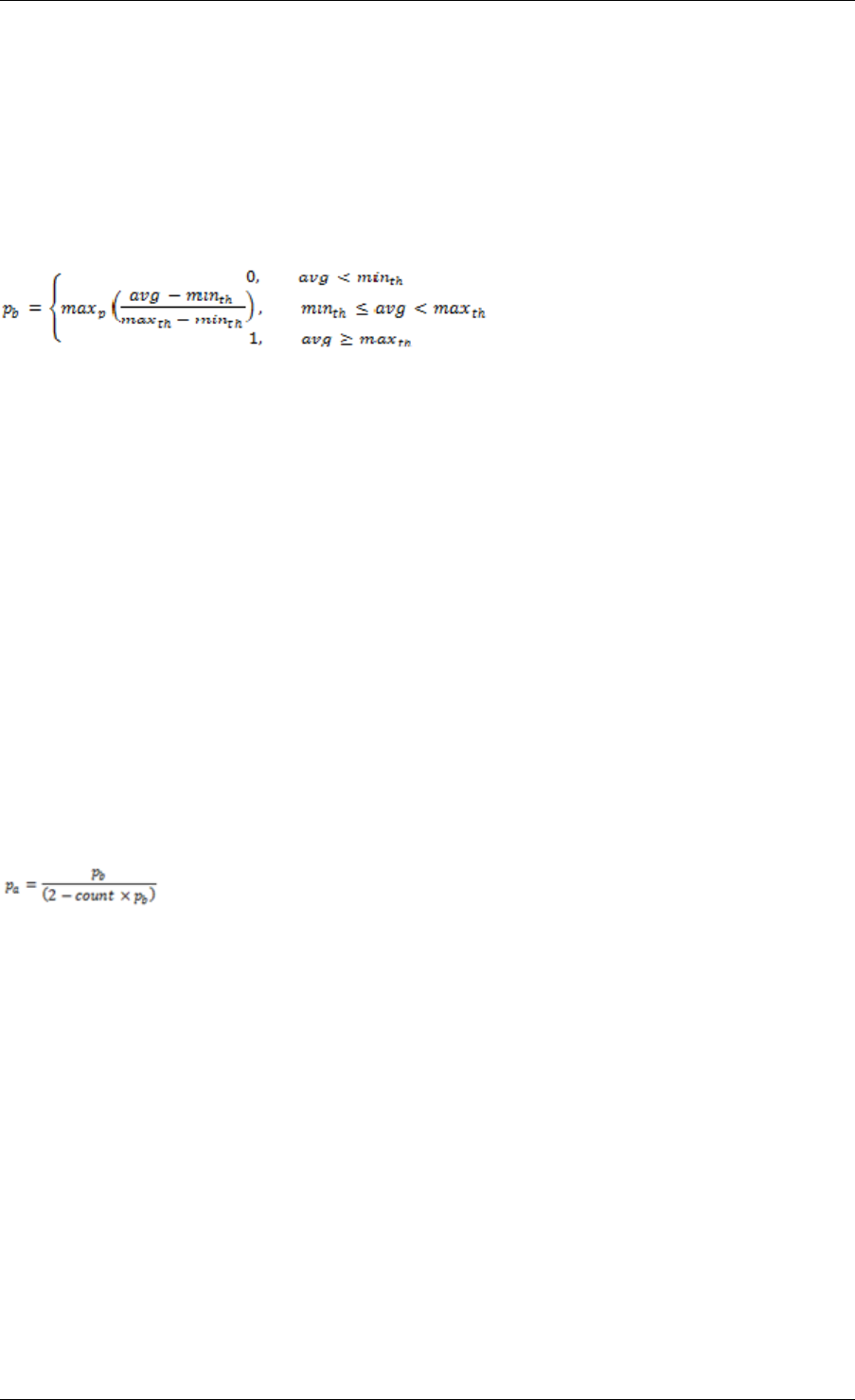

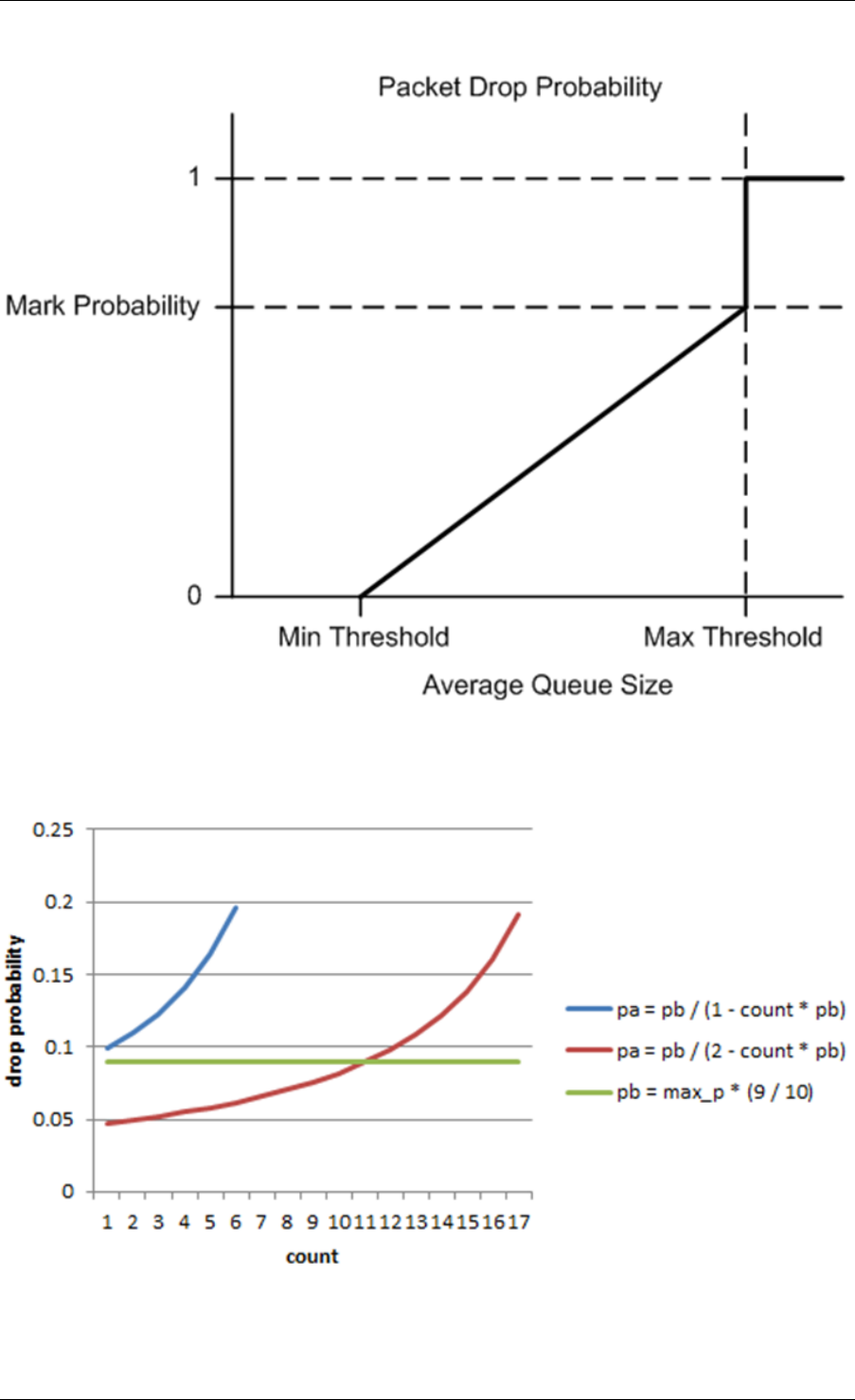

40.3 Dropper .........................................315

v

40.4 Traffic Metering ....................................324

41 Power Management 326

41.1 CPU Frequency Scaling ................................326

41.2 Core-load Throttling through C-States ........................327

41.3 Per-core Turbo Boost .................................327

41.4 Use of Power Library in a Hyper-Threaded Environment ..............327

41.5 API Overview of the Power Library ..........................327

41.6 User Cases .......................................328

41.7 Empty Poll API .....................................328

41.8 User Cases .......................................329

41.9 References .......................................329

42 Packet Classification and Access Control 330

42.1 Overview ........................................330

42.2 Application Programming Interface (API) Usage ..................336

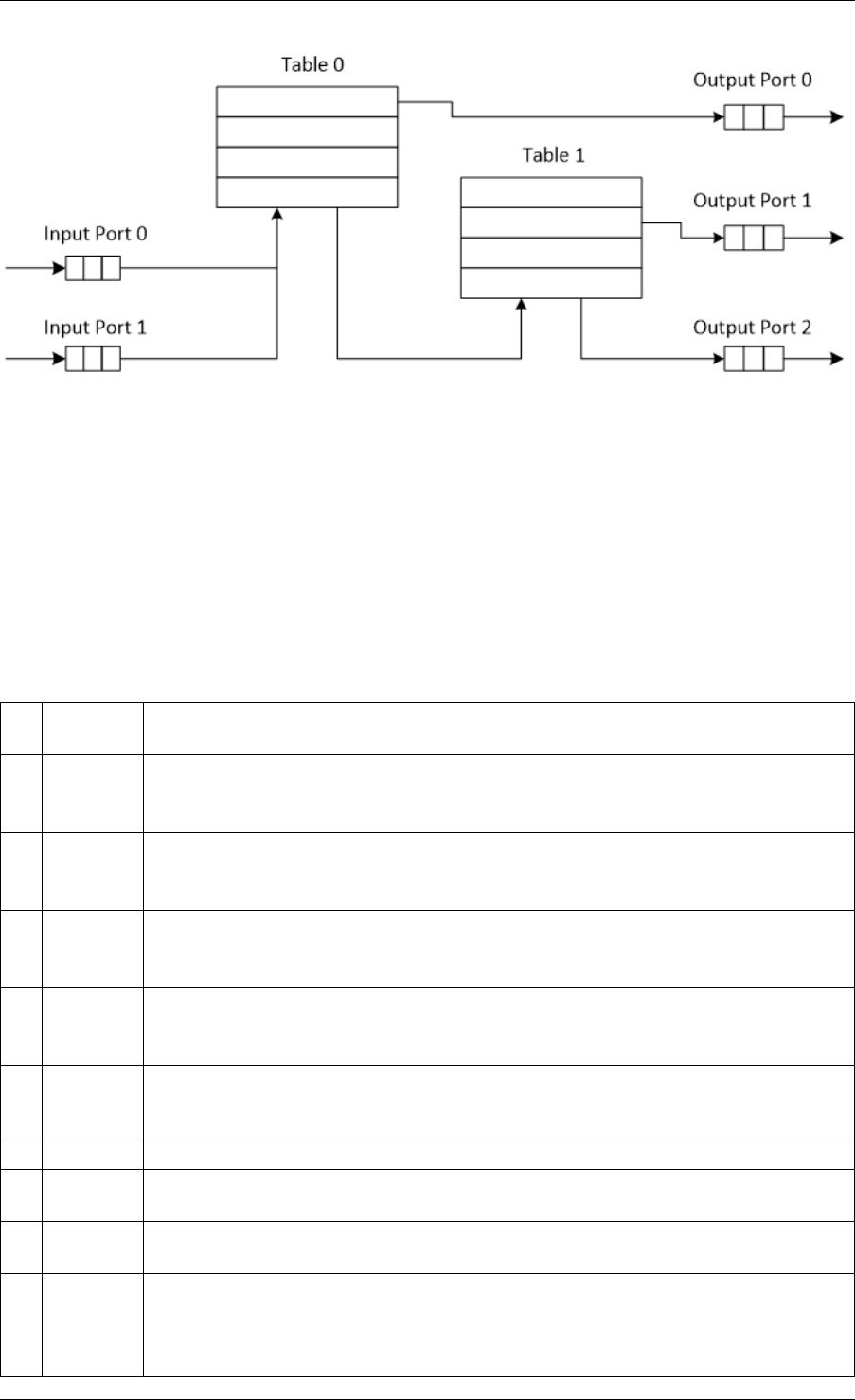

43 Packet Framework 339

43.1 Design Objectives ...................................339

43.2 Overview ........................................339

43.3 Port Library Design ..................................340

43.4 Table Library Design ..................................341

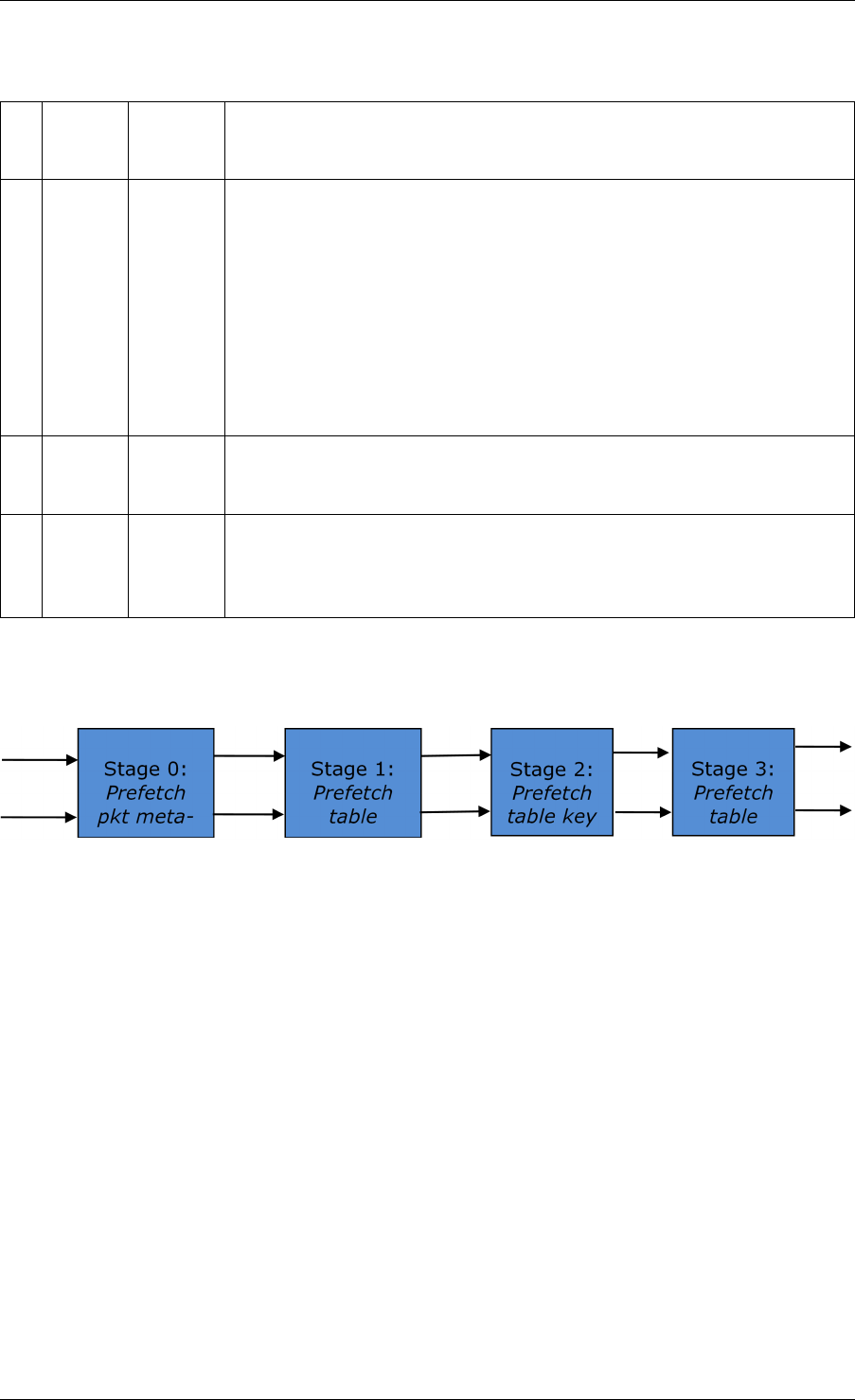

43.5 Pipeline Library Design ................................355

43.6 Multicore Scaling ...................................357

43.7 Interfacing with Accelerators .............................358

44 Vhost Library 359

44.1 Vhost API Overview ..................................359

44.2 Vhost-user Implementations .............................362

44.3 Guest memory requirement ..............................363

44.4 Vhost supported vSwitch reference .........................363

44.5 Vhost data path acceleration (vDPA) .........................363

45 Metrics Library 365

45.1 Initialising the library ..................................365

45.2 Registering metrics ..................................365

45.3 Updating metric values ................................366

45.4 Querying metrics ...................................366

45.5 Bit-rate statistics library ................................367

45.6 Latency statistics library ................................368

46 Berkeley Packet Filter Library 370

46.1 Not currently supported eBPF features .......................370

47 Source Organization 371

47.1 Makefiles and Config .................................371

47.2 Libraries ........................................371

47.3 Drivers .........................................372

47.4 Applications ......................................372

48 Development Kit Build System 374

48.1 Building the Development Kit Binary .........................374

48.2 Building External Applications ............................375

vi

48.3 Makefile Description ..................................376

49 Development Kit Root Makefile Help 381

49.1 Configuration Targets .................................381

49.2 Build Targets ......................................381

49.3 Install Targets .....................................382

49.4 Test Targets ......................................382

49.5 Documentation Targets ................................382

49.6 Misc Targets ......................................383

49.7 Other Useful Command-line Variables ........................383

49.8 Make in a Build Directory ...............................383

49.9 Compiling for Debug ..................................383

50 Extending the DPDK 384

50.1 Example: Adding a New Library libfoo ........................384

51 Building Your Own Application 386

51.1 Compiling a Sample Application in the Development Kit Directory ........386

51.2 Build Your Own Application Outside the Development Kit .............386

51.3 Customizing Makefiles ................................386

52 External Application/Library Makefile help 388

52.1 Prerequisites ......................................388

52.2 Build Targets ......................................388

52.3 Help Targets ......................................388

52.4 Other Useful Command-line Variables ........................389

52.5 Make from Another Directory .............................389

53 Performance Optimization Guidelines 390

53.1 Introduction .......................................390

54 Writing Efficient Code 391

54.1 Memory .........................................391

54.2 Communication Between lcores ...........................392

54.3 PMD Driver .......................................393

54.4 Locks and Atomic Operations ............................394

54.5 Coding Considerations ................................394

54.6 Setting the Target CPU Type .............................394

55 Profile Your Application 395

55.1 Profiling on x86 ....................................395

55.2 Profiling on ARM64 ..................................395

56 Glossary 397

vii

CHAPTER

ONE

INTRODUCTION

This document provides software architecture information, development environment informa-

tion and optimization guidelines.

For programming examples and for instructions on compiling and running each sample appli-

cation, see the DPDK Sample Applications User Guide for details.

For general information on compiling and running applications, see the DPDK Getting Started

Guide.

1.1 Documentation Roadmap

The following is a list of DPDK documents in the suggested reading order:

•Release Notes : Provides release-specific information, including supported features,

limitations, fixed issues, known issues and so on. Also, provides the answers to frequently

asked questions in FAQ format.

•Getting Started Guide : Describes how to install and configure the DPDK software;

designed to get users up and running quickly with the software.

•FreeBSD* Getting Started Guide : A document describing the use of the DPDK with

FreeBSD* has been added in DPDK Release 1.6.0. Refer to this guide for installation

and configuration instructions to get started using the DPDK with FreeBSD*.

•Programmer’s Guide (this document): Describes:

–The software architecture and how to use it (through examples), specifically in a

Linux* application (linuxapp) environment

–The content of the DPDK, the build system (including the commands that can be

used in the root DPDK Makefile to build the development kit and an application) and

guidelines for porting an application

–Optimizations used in the software and those that should be considered for new

development

A glossary of terms is also provided.

•API Reference : Provides detailed information about DPDK functions, data structures

and other programming constructs.

•Sample Applications User Guide: Describes a set of sample applications. Each chap-

ter describes a sample application that showcases specific functionality and provides

instructions on how to compile, run and use the sample application.

1

Programmer’s Guide, Release 18.11.0-rc5

1.2 Related Publications

The following documents provide information that is relevant to the development of applications

using the DPDK:

• Intel® 64 and IA-32 Architectures Software Developer’s Manual Volume 3A: System Pro-

gramming Guide

Part 1: Architecture Overview

1.2. Related Publications 2

CHAPTER

TWO

OVERVIEW

This section gives a global overview of the architecture of Data Plane Development Kit (DPDK).

The main goal of the DPDK is to provide a simple, complete framework for fast packet process-

ing in data plane applications. Users may use the code to understand some of the techniques

employed, to build upon for prototyping or to add their own protocol stacks. Alternative ecosys-

tem options that use the DPDK are available.

The framework creates a set of libraries for specific environments through the creation of an

Environment Abstraction Layer (EAL), which may be specific to a mode of the Intel® architec-

ture (32-bit or 64-bit), Linux* user space compilers or a specific platform. These environments

are created through the use of make files and configuration files. Once the EAL library is cre-

ated, the user may link with the library to create their own applications. Other libraries, outside

of EAL, including the Hash, Longest Prefix Match (LPM) and rings libraries are also provided.

Sample applications are provided to help show the user how to use various features of the

DPDK.

The DPDK implements a run to completion model for packet processing, where all resources

must be allocated prior to calling Data Plane applications, running as execution units on logical

processing cores. The model does not support a scheduler and all devices are accessed by

polling. The primary reason for not using interrupts is the performance overhead imposed by

interrupt processing.

In addition to the run-to-completion model, a pipeline model may also be used by passing

packets or messages between cores via the rings. This allows work to be performed in stages

and may allow more efficient use of code on cores.

2.1 Development Environment

The DPDK project installation requires Linux and the associated toolchain, such as one or more

compilers, assembler, make utility, editor and various libraries to create the DPDK components

and libraries.

Once these libraries are created for the specific environment and architecture, they may then

be used to create the user’s data plane application.

When creating applications for the Linux user space, the glibc library is used. For DPDK

applications, two environmental variables (RTE_SDK and RTE_TARGET) must be configured

before compiling the applications. The following are examples of how the variables can be set:

export RTE_SDK=/home/user/DPDK

export RTE_TARGET=x86_64-native-linuxapp-gcc

3

Programmer’s Guide, Release 18.11.0-rc5

See the DPDK Getting Started Guide for information on setting up the development environ-

ment.

2.2 Environment Abstraction Layer

The Environment Abstraction Layer (EAL) provides a generic interface that hides the environ-

ment specifics from the applications and libraries. The services provided by the EAL are:

• DPDK loading and launching

• Support for multi-process and multi-thread execution types

• Core affinity/assignment procedures

• System memory allocation/de-allocation

• Atomic/lock operations

• Time reference

• PCI bus access

• Trace and debug functions

• CPU feature identification

• Interrupt handling

• Alarm operations

• Memory management (malloc)

The EAL is fully described in Environment Abstraction Layer.

2.3 Core Components

The core components are a set of libraries that provide all the elements needed for high-

performance packet processing applications.

2.3.1 Ring Manager (librte_ring)

The ring structure provides a lockless multi-producer, multi-consumer FIFO API in a finite size

table. It has some advantages over lockless queues; easier to implement, adapted to bulk

operations and faster. A ring is used by the Memory Pool Manager (librte_mempool) and

may be used as a general communication mechanism between cores and/or execution blocks

connected together on a logical core.

This ring buffer and its usage are fully described in Ring Library .

2.3.2 Memory Pool Manager (librte_mempool)

The Memory Pool Manager is responsible for allocating pools of objects in memory. A pool

is identified by name and uses a ring to store free objects. It provides some other optional

2.2. Environment Abstraction Layer 4

Programmer’s Guide, Release 18.11.0-rc5

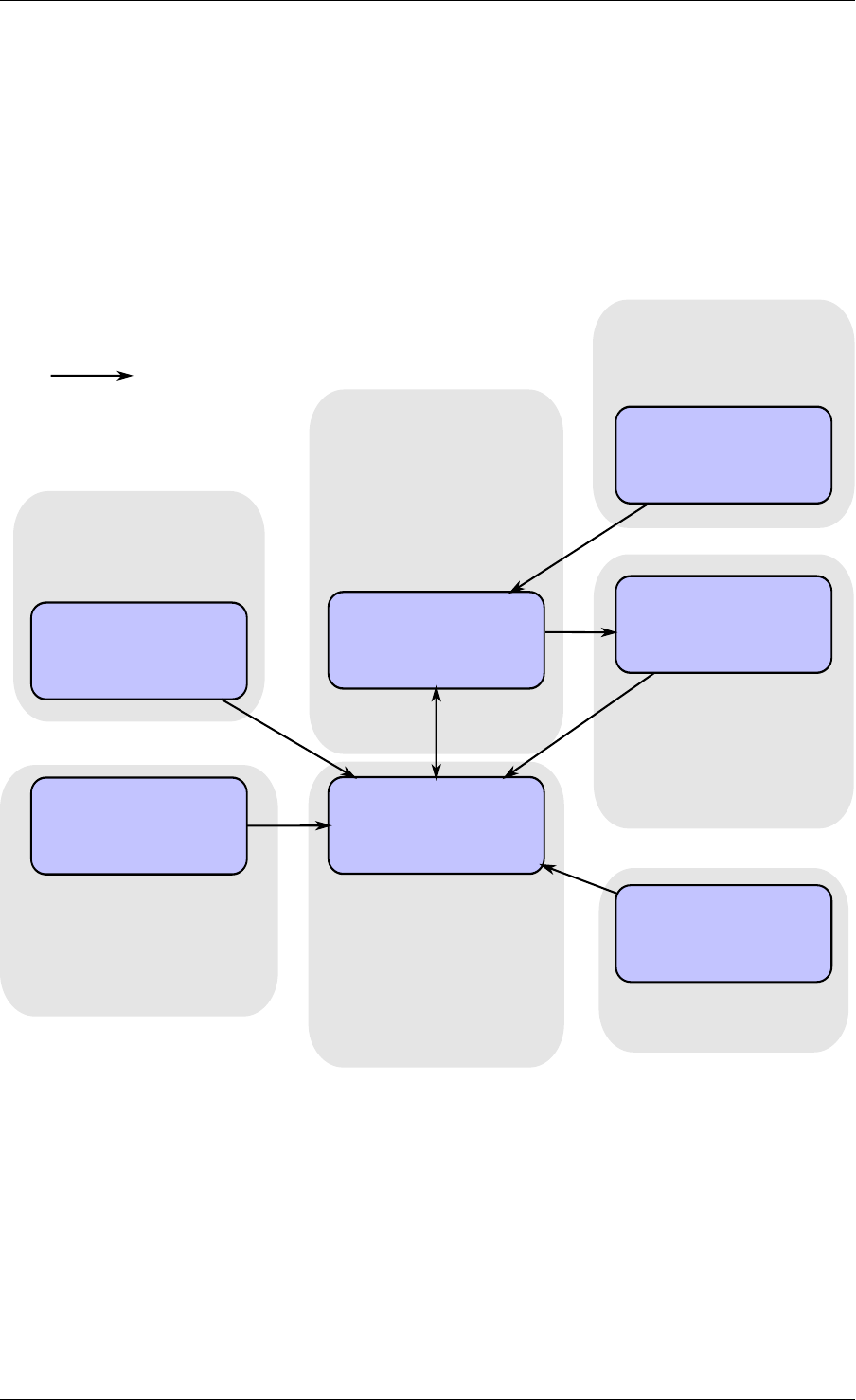

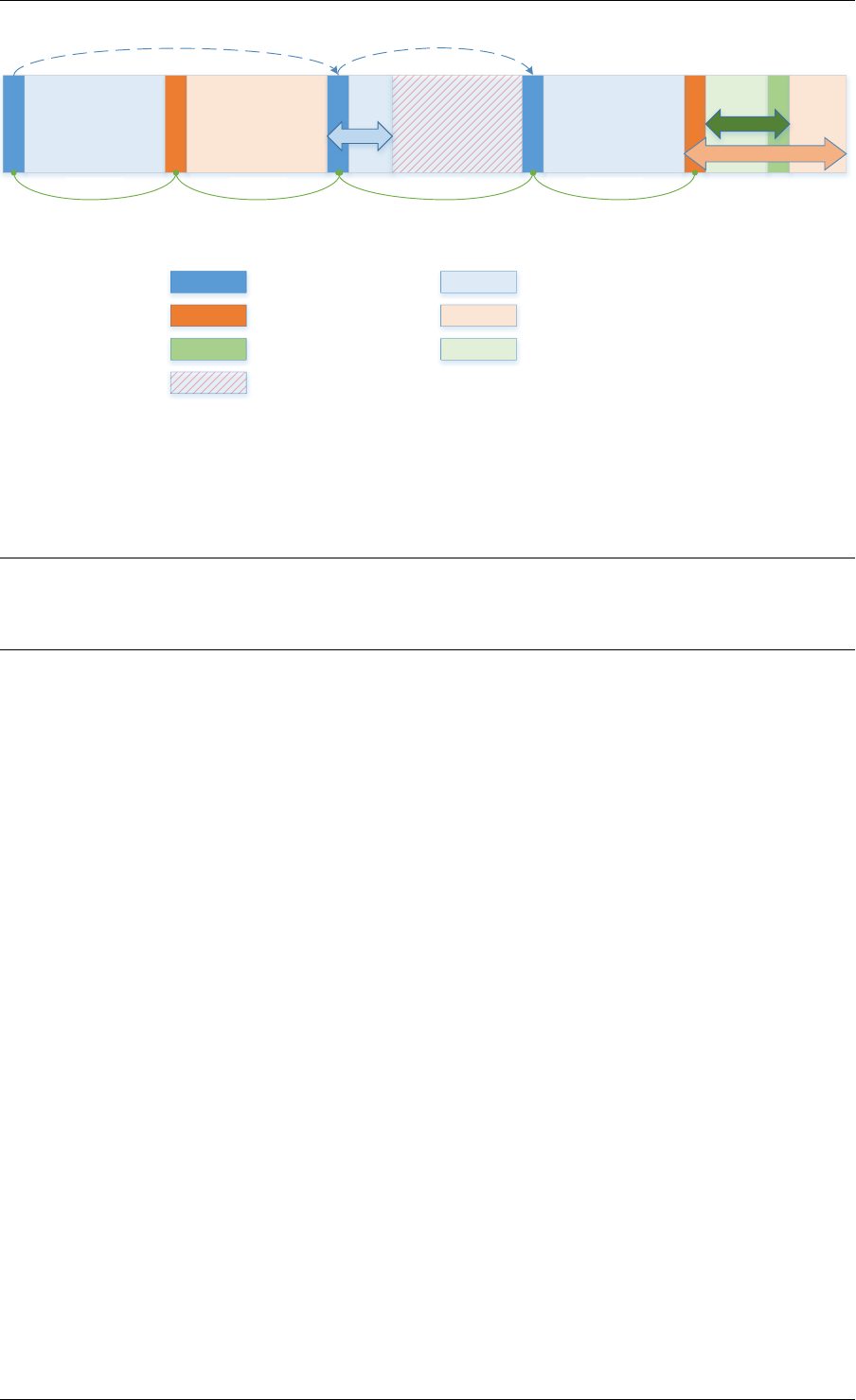

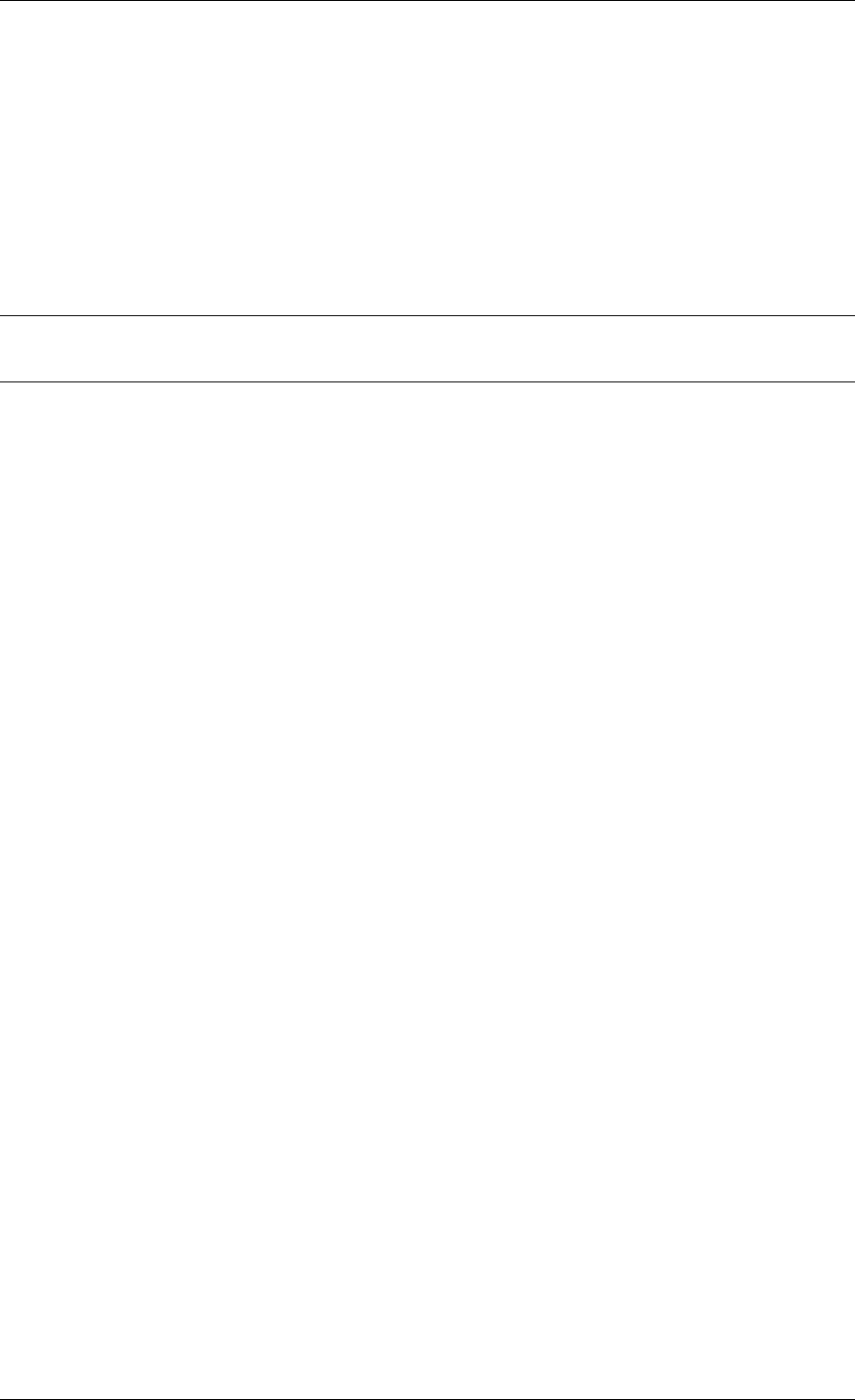

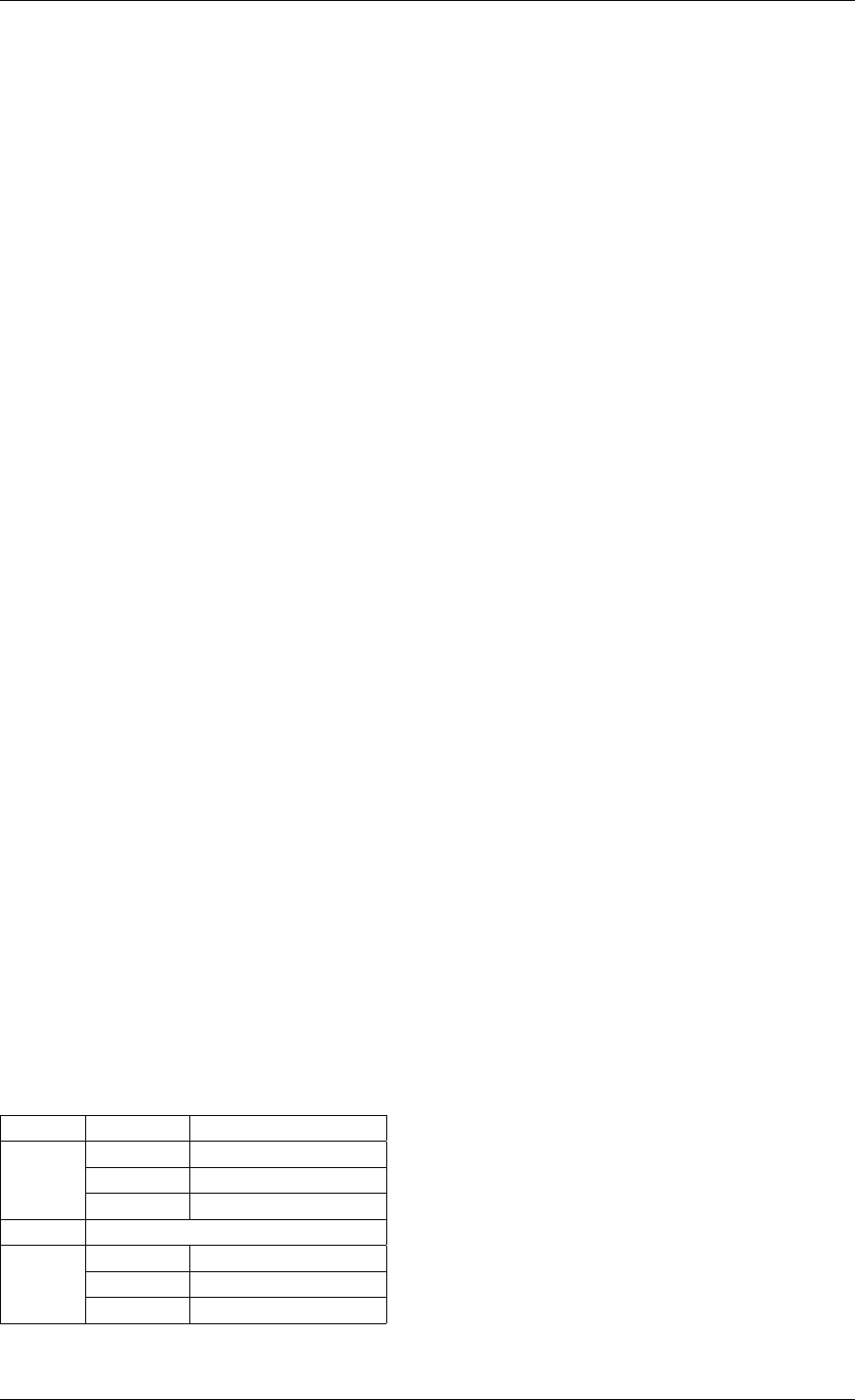

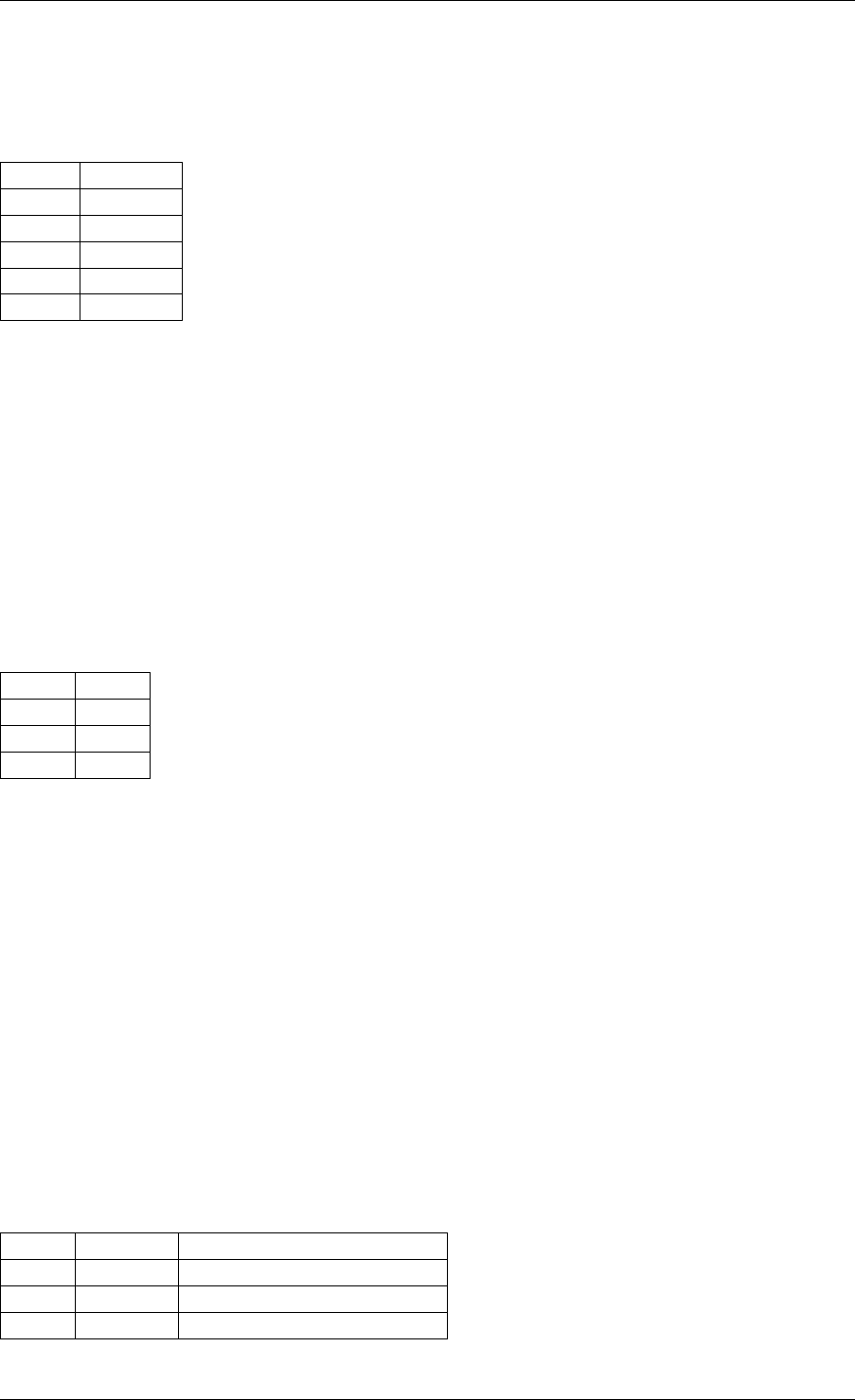

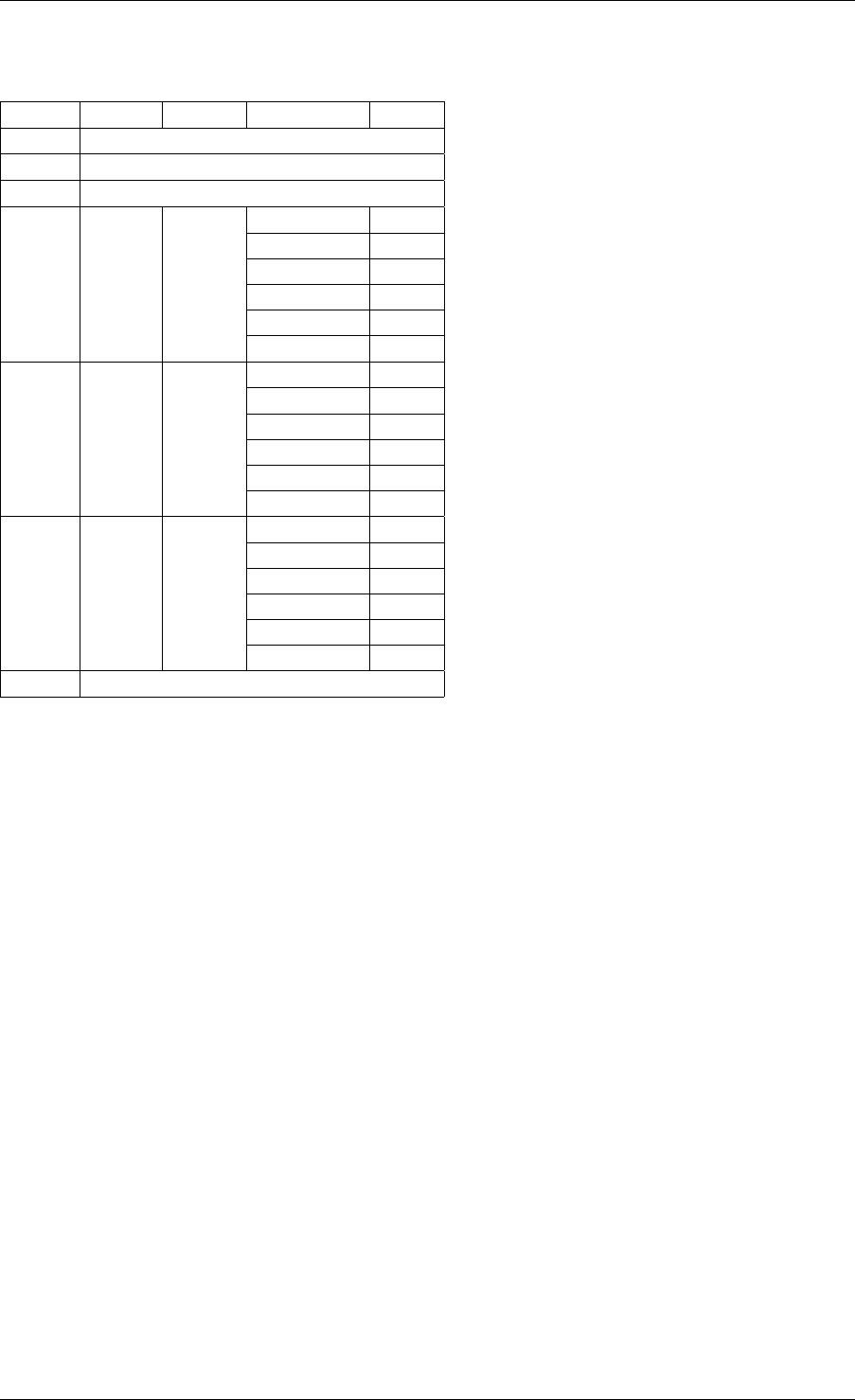

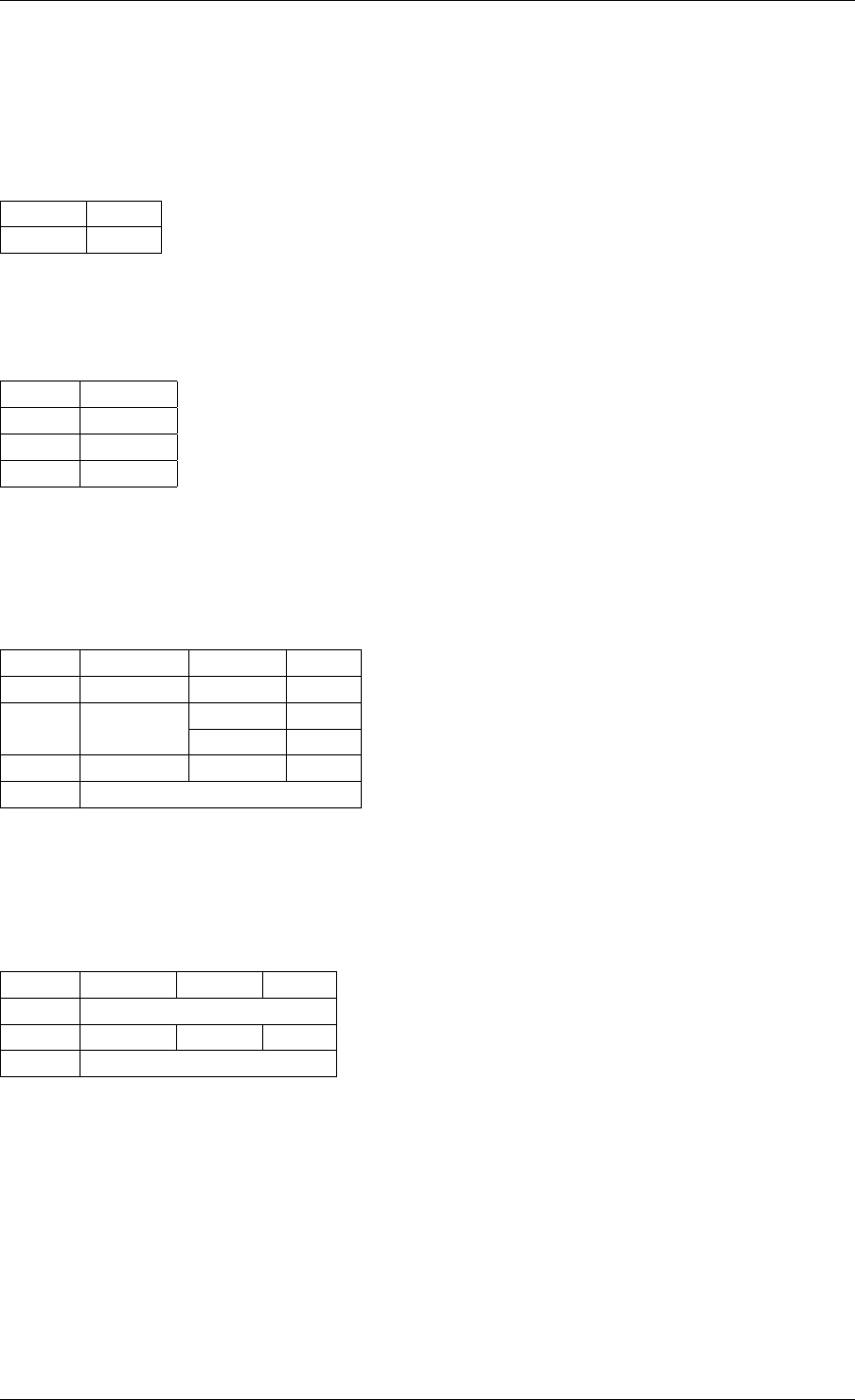

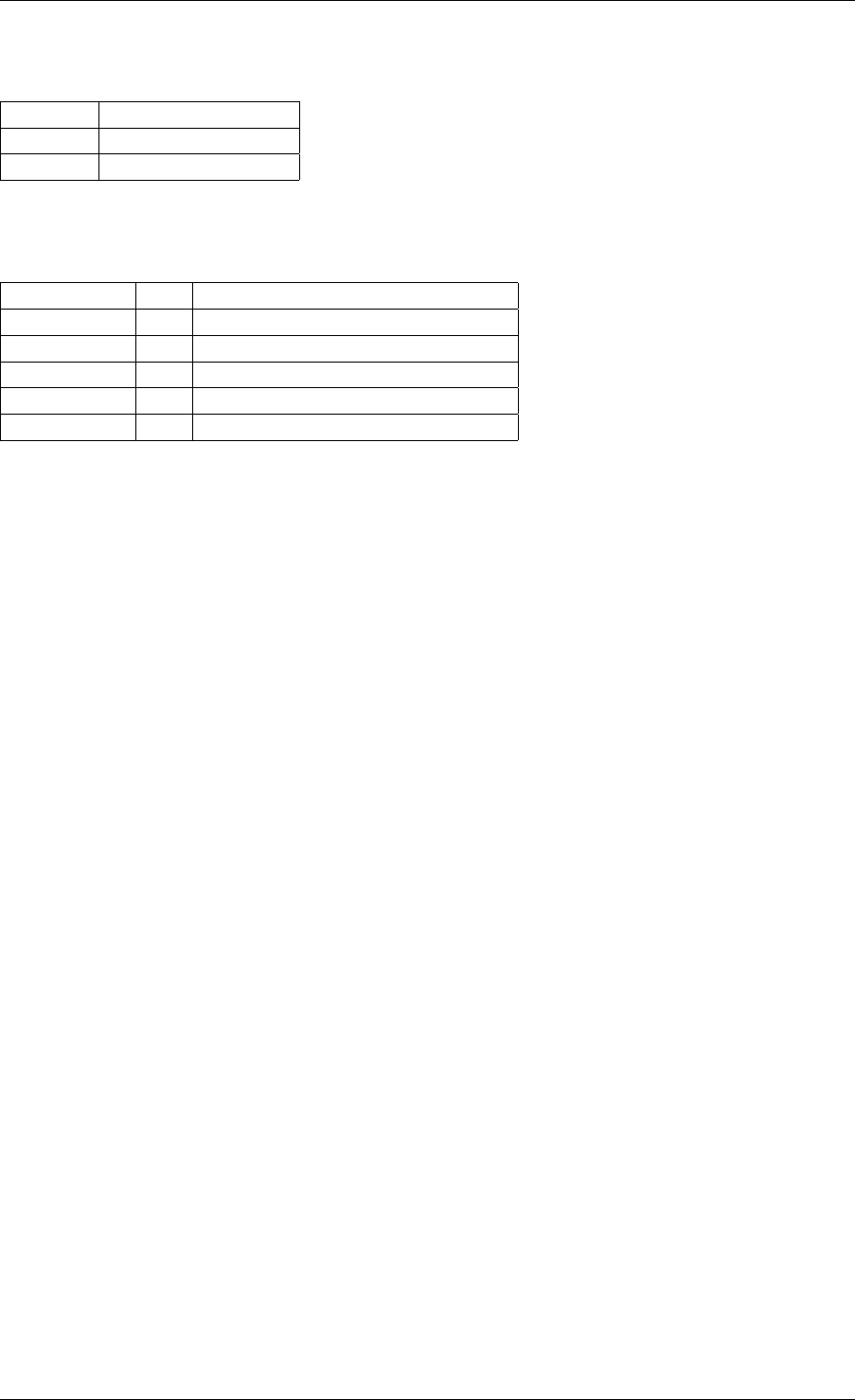

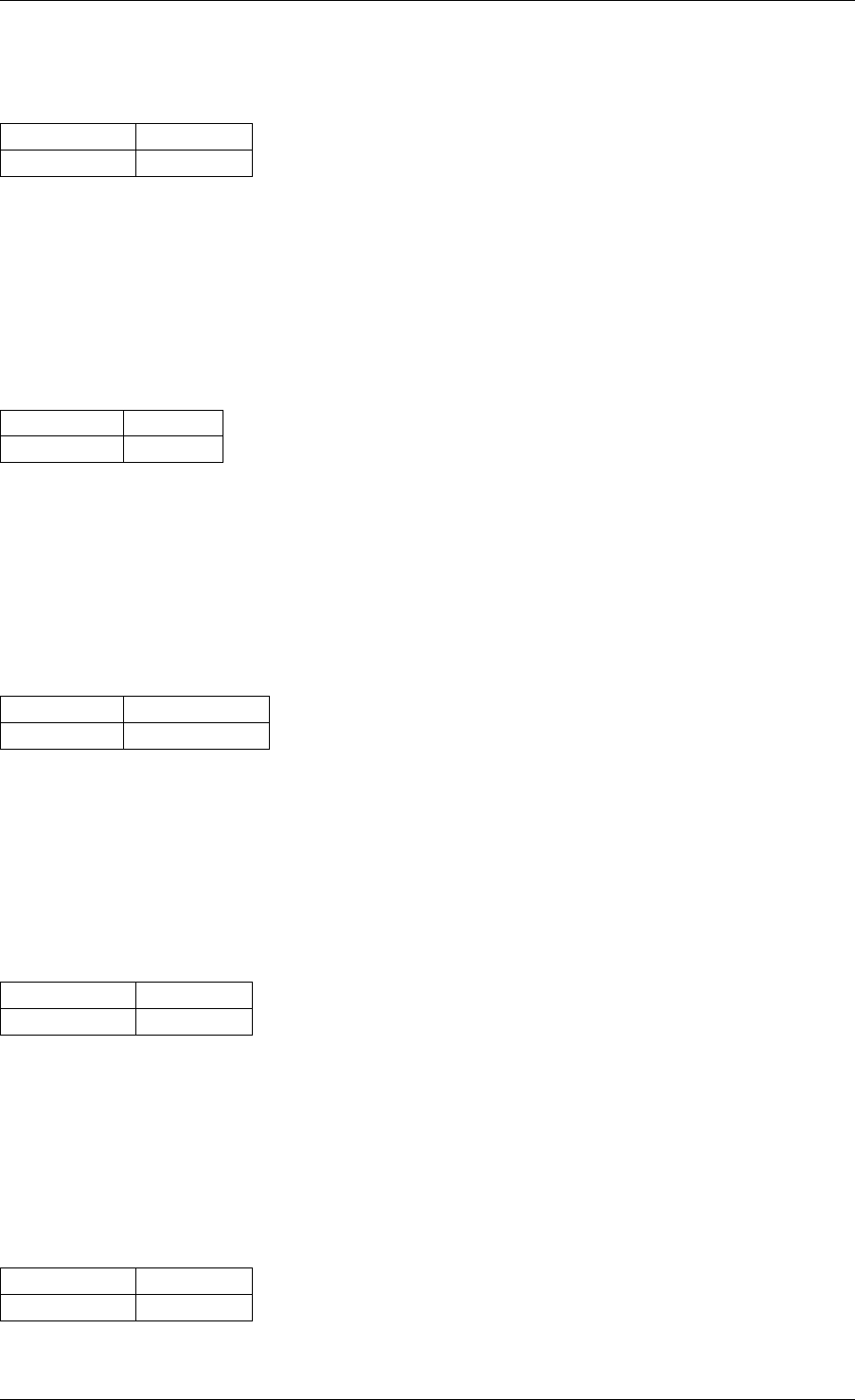

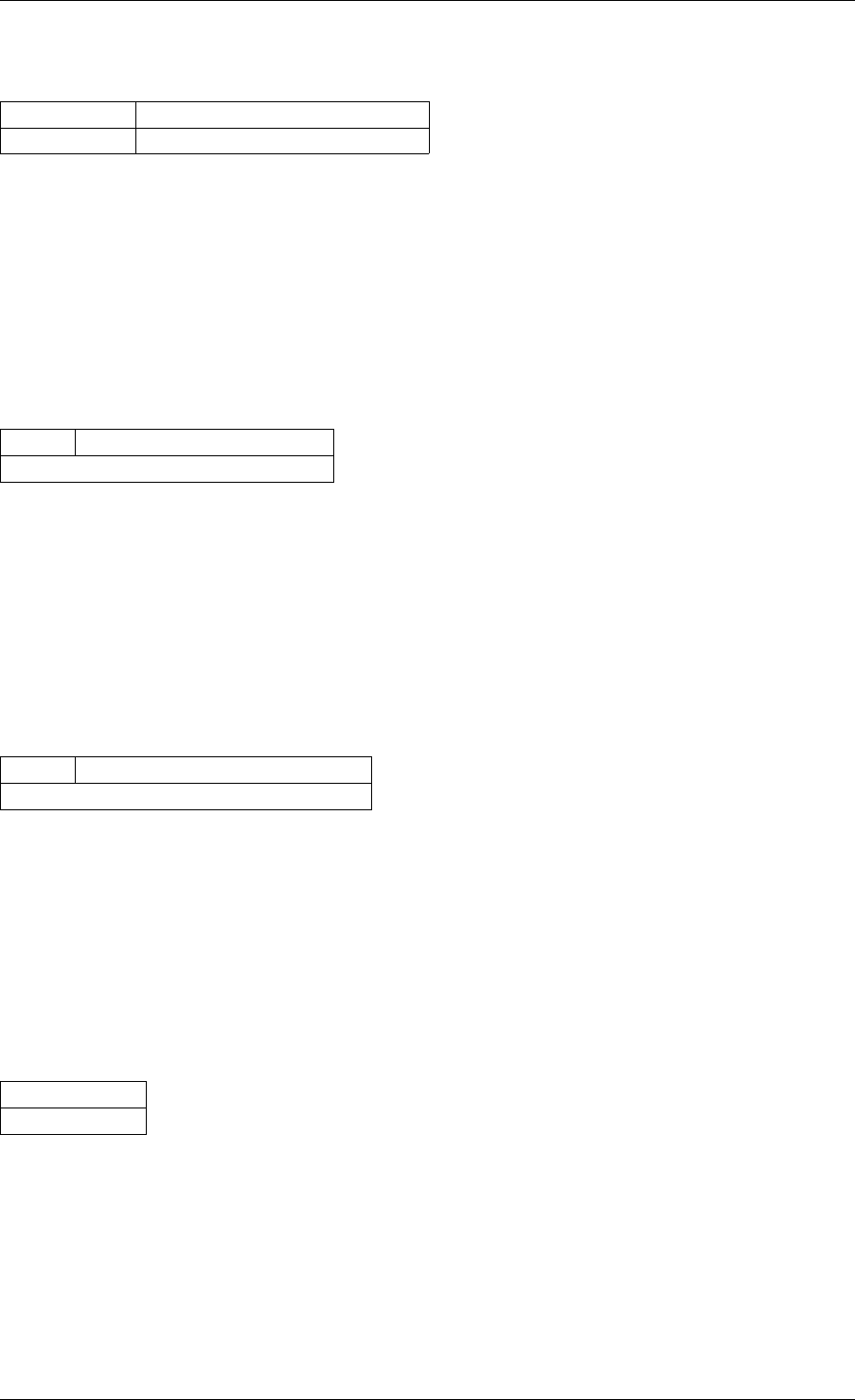

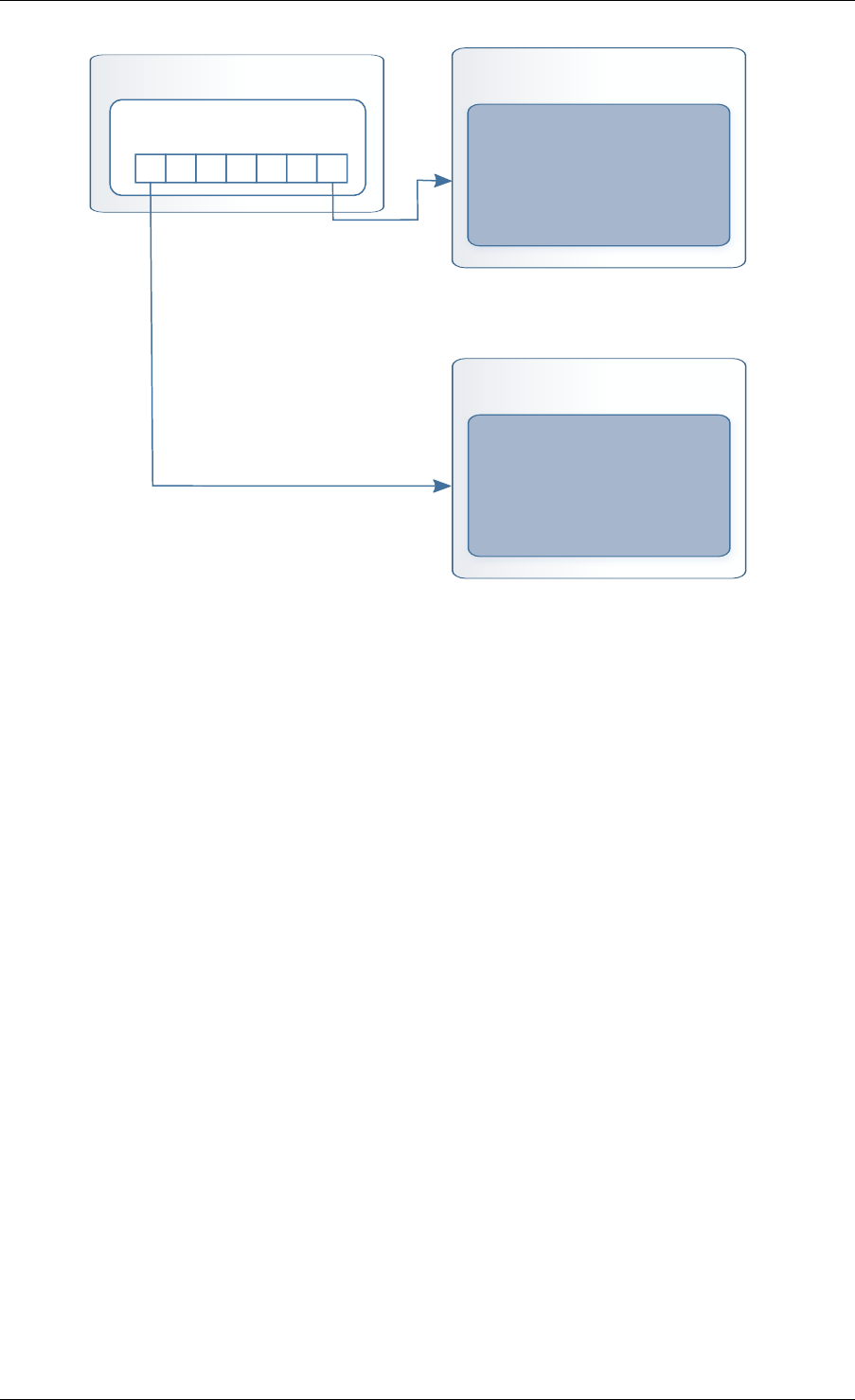

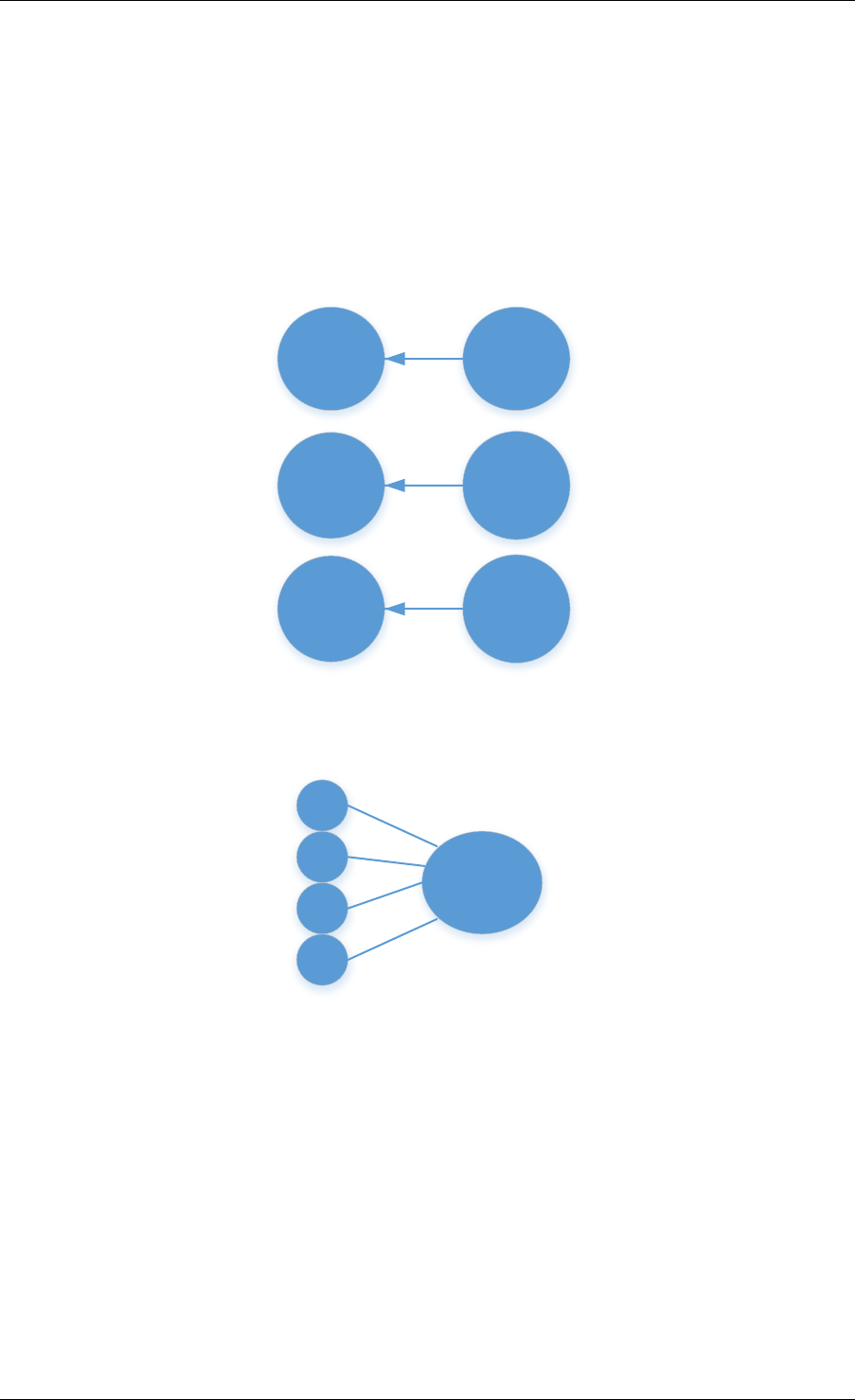

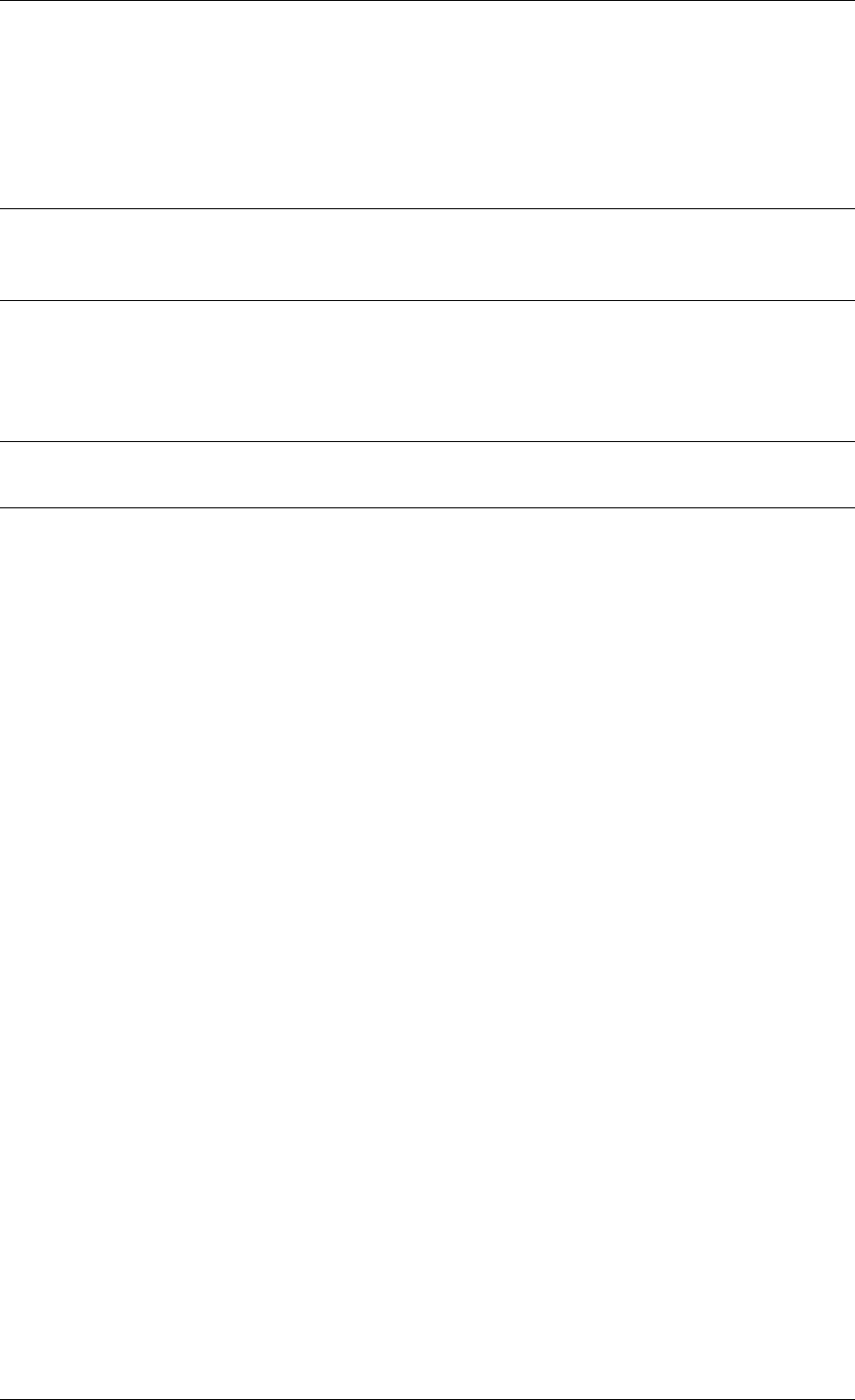

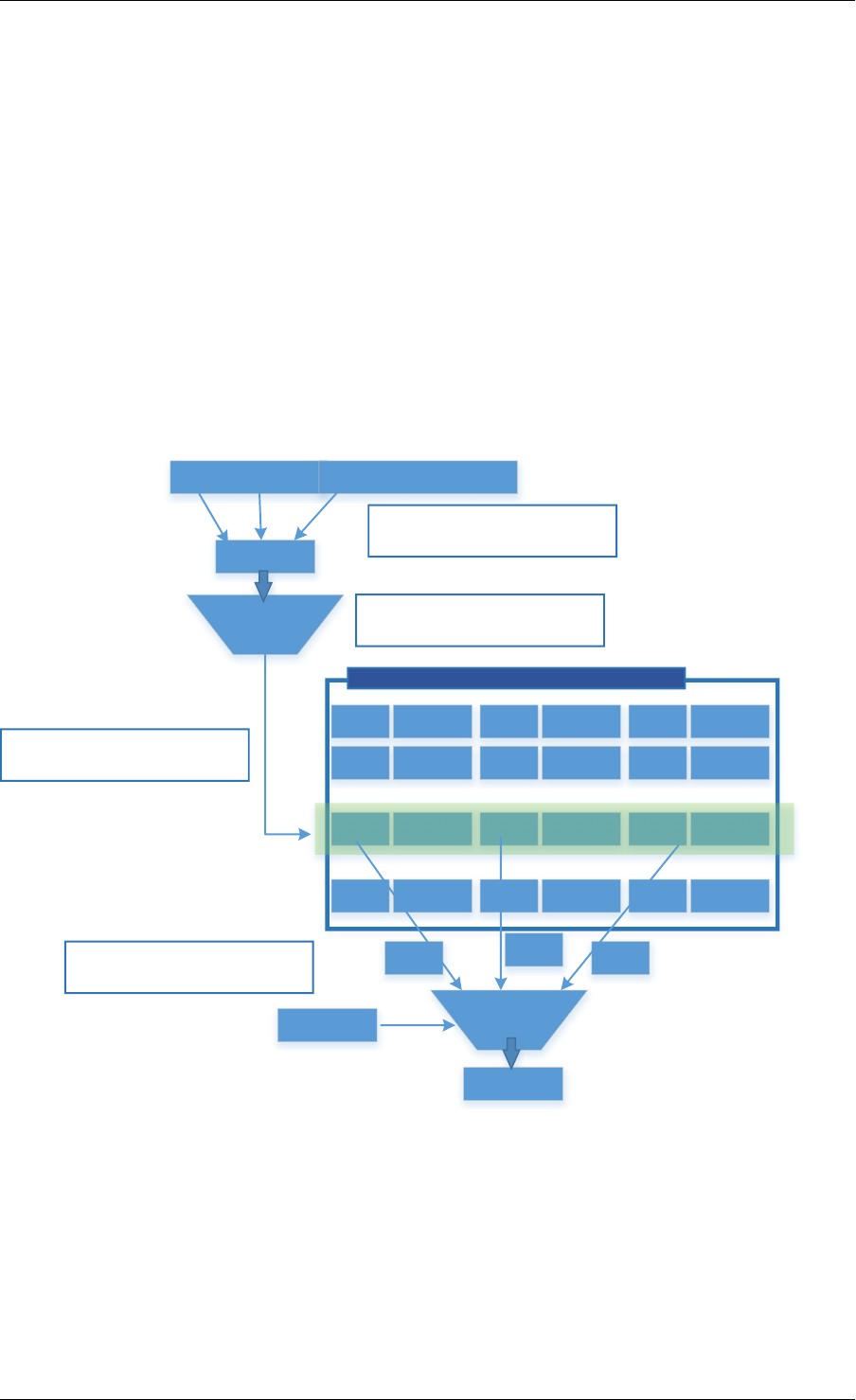

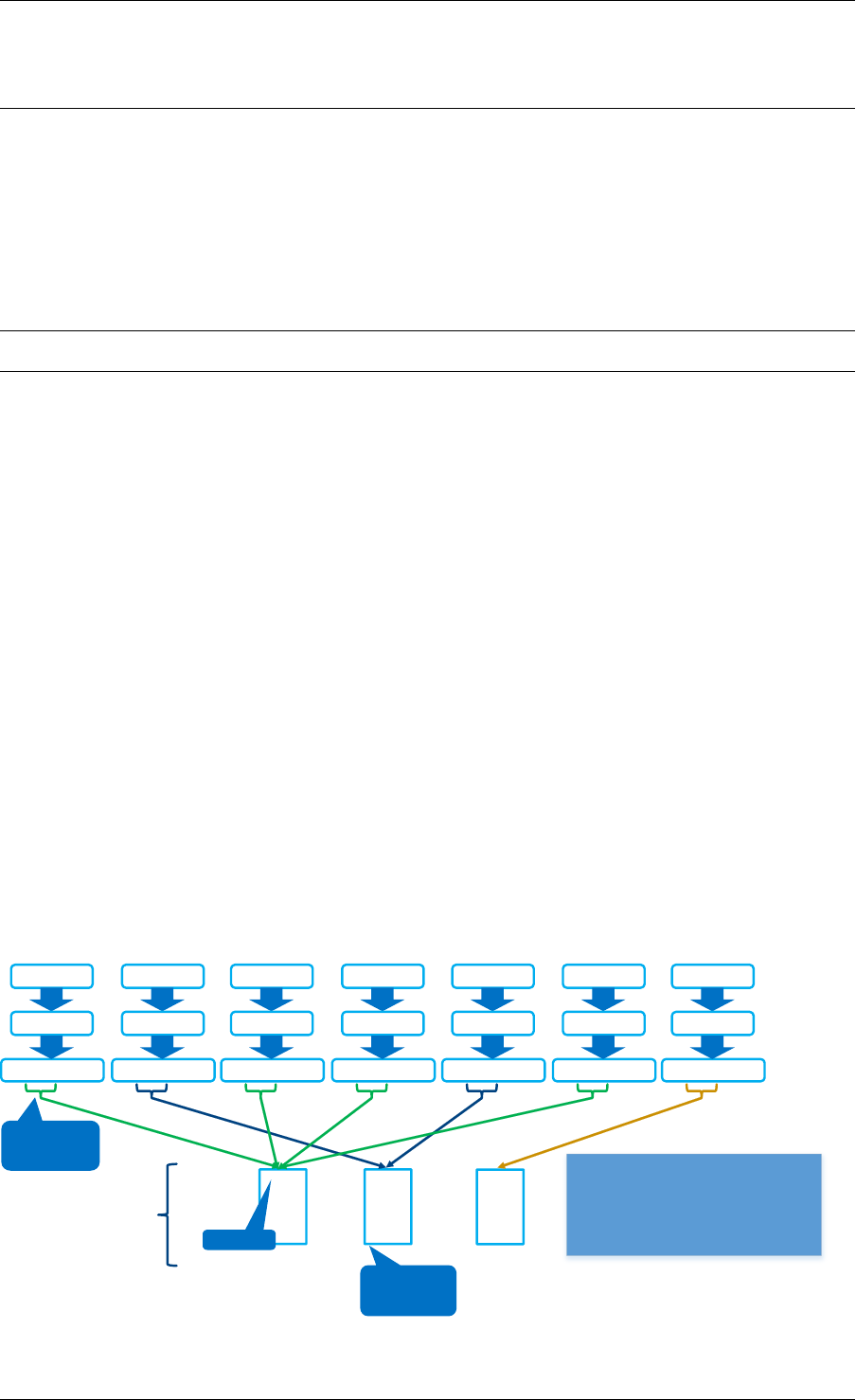

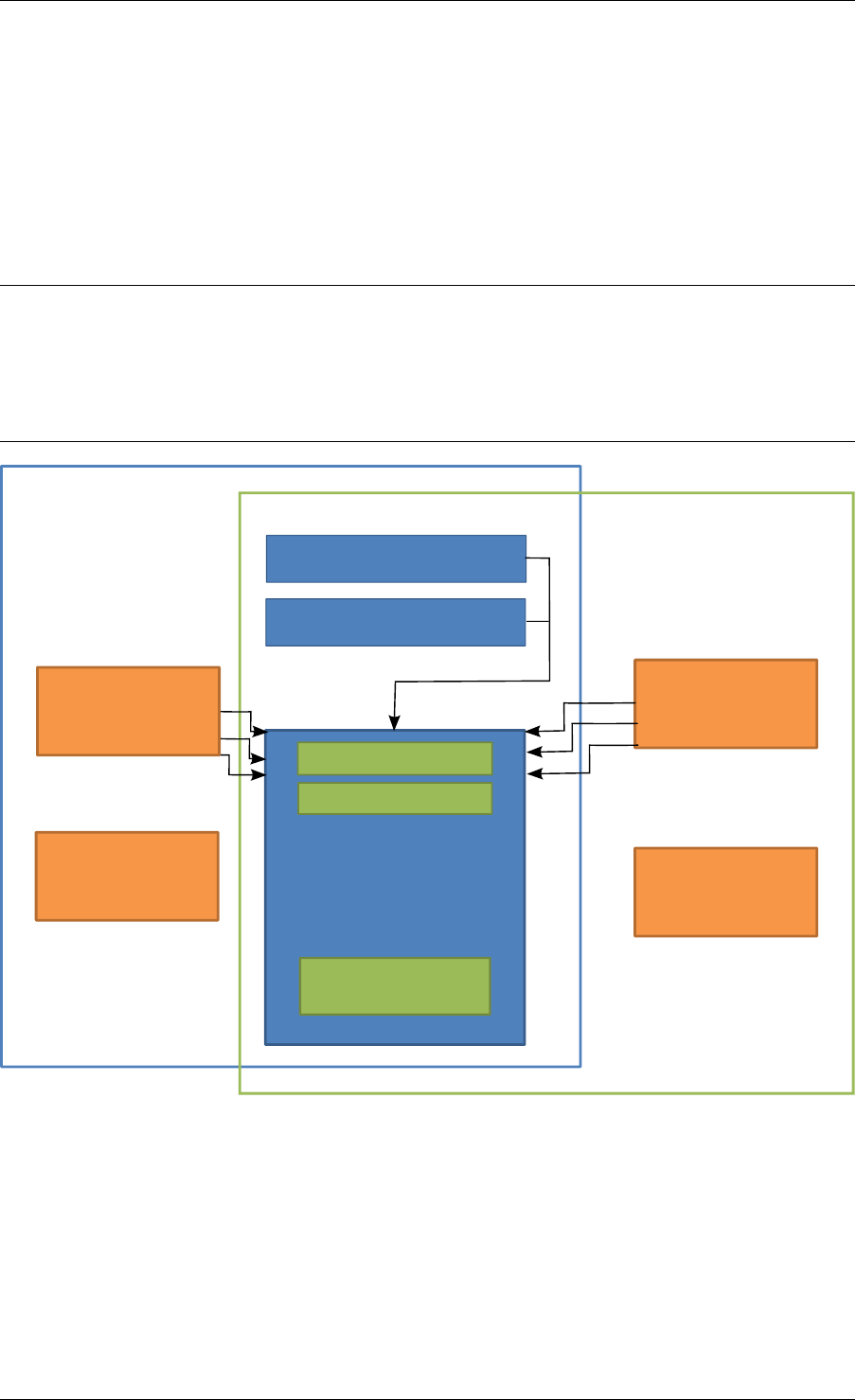

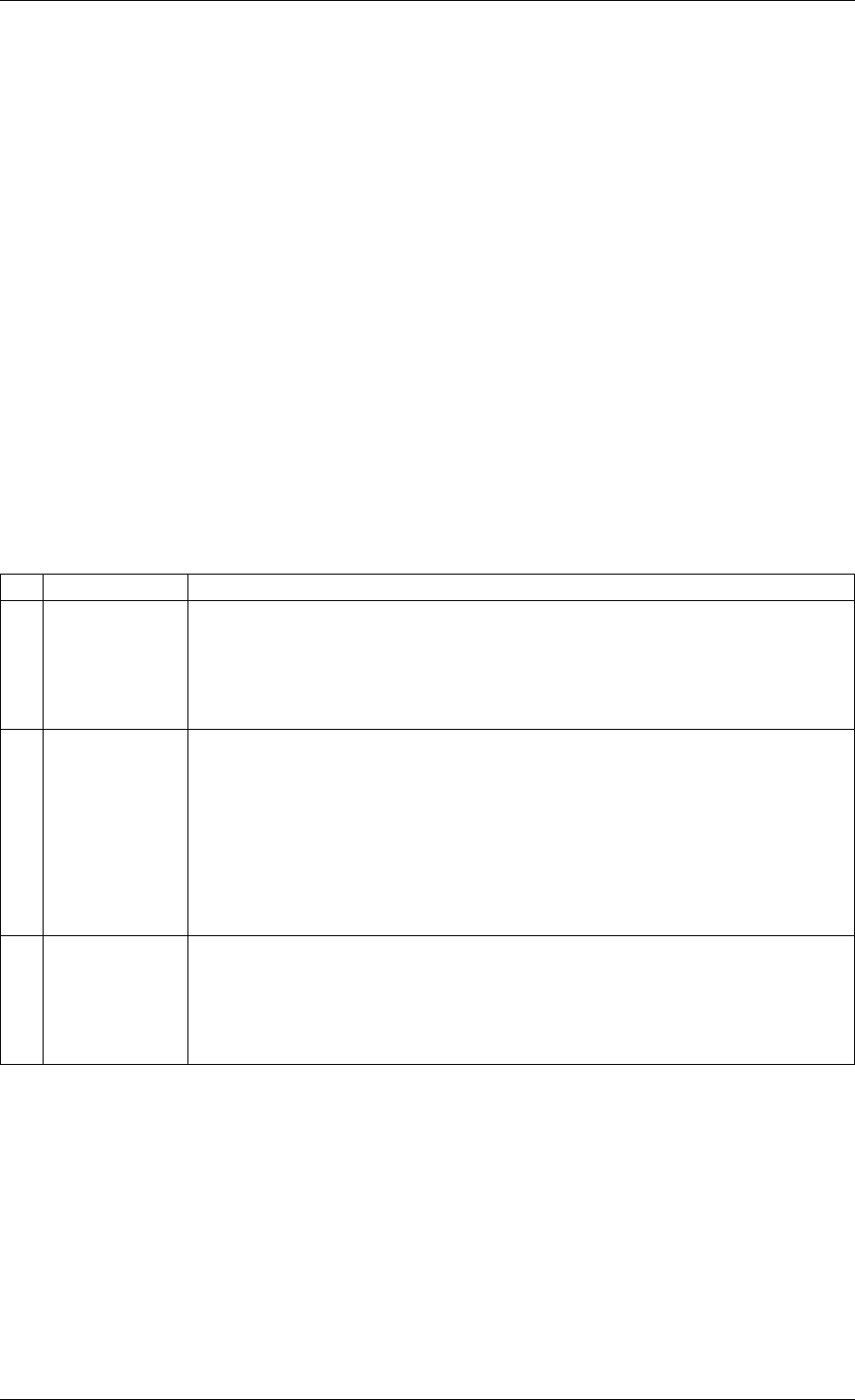

rte_malloc rte_eal + libc

rte_ring

rte_mempool

rte_mbuf

rte_timer

X uses Y

Allocation of named

memory zones using

libc's malloc()

Environment abstraction

layer: RTE loading, memory

allocation, time reference,

PCI access, logging

Timer facilities. Based

on HPET interface that

is provided by EAL.

Handle a pool of objects

using a ring to store

them. Allow bulk

enqueue/dequeue and

per-CPU cache.

Fixed-size lockless

FIFO for storing objects

in a table.

Manipulation of packet

buffers carrying network

data.

rte_debug

Provides debug helpers

XY

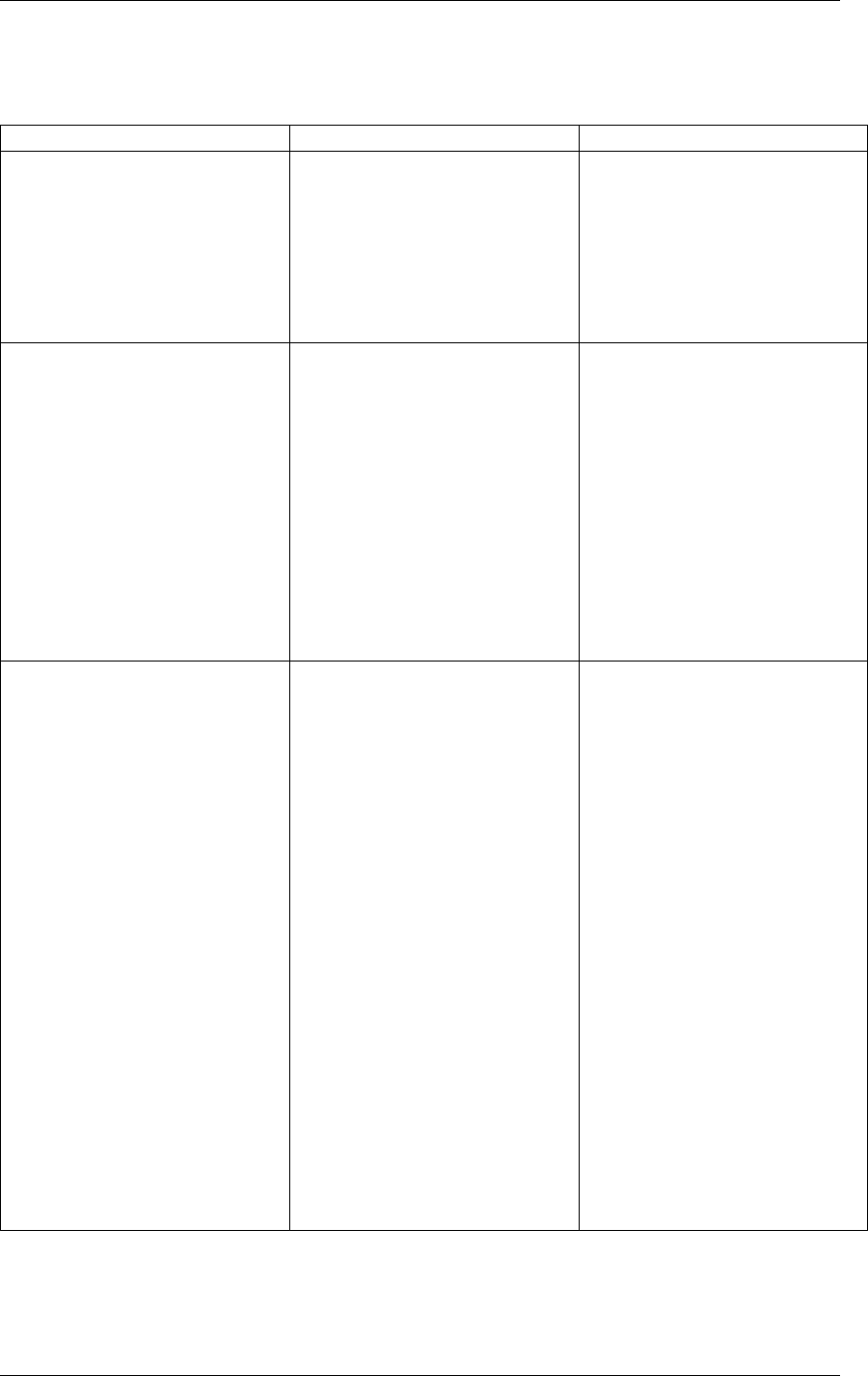

Fig. 2.1: Core Components Architecture

2.3. Core Components 5

Programmer’s Guide, Release 18.11.0-rc5

services, such as a per-core object cache and an alignment helper to ensure that objects are

padded to spread them equally on all RAM channels.

This memory pool allocator is described in Mempool Library.

2.3.3 Network Packet Buffer Management (librte_mbuf)

The mbuf library provides the facility to create and destroy buffers that may be used by the

DPDK application to store message buffers. The message buffers are created at startup time

and stored in a mempool, using the DPDK mempool library.

This library provides an API to allocate/free mbufs, manipulate packet buffers which are used

to carry network packets.

Network Packet Buffer Management is described in Mbuf Library .

2.3.4 Timer Manager (librte_timer)

This library provides a timer service to DPDK execution units, providing the ability to execute

a function asynchronously. It can be periodic function calls, or just a one-shot call. It uses

the timer interface provided by the Environment Abstraction Layer (EAL) to get a precise time

reference and can be initiated on a per-core basis as required.

The library documentation is available in Timer Library .

2.4 Ethernet* Poll Mode Driver Architecture

The DPDK includes Poll Mode Drivers (PMDs) for 1 GbE, 10 GbE and 40GbE, and para virtu-

alized virtio Ethernet controllers which are designed to work without asynchronous, interrupt-

based signaling mechanisms.

See Poll Mode Driver.

2.5 Packet Forwarding Algorithm Support

The DPDK includes Hash (librte_hash) and Longest Prefix Match (LPM,librte_lpm) libraries to

support the corresponding packet forwarding algorithms.

See Hash Library and LPM Library for more information.

2.6 librte_net

The librte_net library is a collection of IP protocol definitions and convenience macros. It is

based on code from the FreeBSD* IP stack and contains protocol numbers (for use in IP

headers), IP-related macros, IPv4/IPv6 header structures and TCP, UDP and SCTP header

structures.

2.4. Ethernet* Poll Mode Driver Architecture 6

CHAPTER

THREE

ENVIRONMENT ABSTRACTION LAYER

The Environment Abstraction Layer (EAL) is responsible for gaining access to low-level re-

sources such as hardware and memory space. It provides a generic interface that hides the

environment specifics from the applications and libraries. It is the responsibility of the initializa-

tion routine to decide how to allocate these resources (that is, memory space, devices, timers,

consoles, and so on).

Typical services expected from the EAL are:

• DPDK Loading and Launching: The DPDK and its application are linked as a single

application and must be loaded by some means.

• Core Affinity/Assignment Procedures: The EAL provides mechanisms for assigning exe-

cution units to specific cores as well as creating execution instances.

• System Memory Reservation: The EAL facilitates the reservation of different memory

zones, for example, physical memory areas for device interactions.

• Trace and Debug Functions: Logs, dump_stack, panic and so on.

• Utility Functions: Spinlocks and atomic counters that are not provided in libc.

• CPU Feature Identification: Determine at runtime if a particular feature, for example,

Intel® AVX is supported. Determine if the current CPU supports the feature set that the

binary was compiled for.

• Interrupt Handling: Interfaces to register/unregister callbacks to specific interrupt

sources.

• Alarm Functions: Interfaces to set/remove callbacks to be run at a specific time.

3.1 EAL in a Linux-userland Execution Environment

In a Linux user space environment, the DPDK application runs as a user-space application

using the pthread library.

The EAL performs physical memory allocation using mmap() in hugetlbfs (using huge page

sizes to increase performance). This memory is exposed to DPDK service layers such as the

Mempool Library .

At this point, the DPDK services layer will be initialized, then through pthread setaffinity calls,

each execution unit will be assigned to a specific logical core to run as a user-level thread.

The time reference is provided by the CPU Time-Stamp Counter (TSC) or by the HPET kernel

API through a mmap() call.

7

Programmer’s Guide, Release 18.11.0-rc5

3.1.1 Initialization and Core Launching

Part of the initialization is done by the start function of glibc. A check is also performed at

initialization time to ensure that the micro architecture type chosen in the config file is supported

by the CPU. Then, the main() function is called. The core initialization and launch is done

in rte_eal_init() (see the API documentation). It consist of calls to the pthread library (more

specifically, pthread_self(), pthread_create(), and pthread_setaffinity_np()).

Note: Initialization of objects, such as memory zones, rings, memory pools, lpm tables and

hash tables, should be done as part of the overall application initialization on the master lcore.

The creation and initialization functions for these objects are not multi-thread safe. However,

once initialized, the objects themselves can safely be used in multiple threads simultaneously.

3.1.2 Shutdown and Cleanup

During the initialization of EAL resources such as hugepage backed memory can be allocated

by core components. The memory allocated during rte_eal_init() can be released by

calling the rte_eal_cleanup() function. Refer to the API documentation for details.

3.1.3 Multi-process Support

The Linuxapp EAL allows a multi-process as well as a multi-threaded (pthread) deployment

model. See chapter Multi-process Support for more details.

3.1.4 Memory Mapping Discovery and Memory Reservation

The allocation of large contiguous physical memory is done using the hugetlbfs kernel filesys-

tem. The EAL provides an API to reserve named memory zones in this contiguous memory.

The physical address of the reserved memory for that memory zone is also returned to the

user by the memory zone reservation API.

There are two modes in which DPDK memory subsystem can operate: dynamic mode, and

legacy mode. Both modes are explained below.

Note: Memory reservations done using the APIs provided by rte_malloc are also backed by

pages from the hugetlbfs filesystem.

• Dynamic memory mode

Currently, this mode is only supported on Linux.

In this mode, usage of hugepages by DPDK application will grow and shrink based on applica-

tion’s requests. Any memory allocation through rte_malloc(),rte_memzone_reserve()

or other methods, can potentially result in more hugepages being reserved from the system.

Similarly, any memory deallocation can potentially result in hugepages being released back to

the system.

Memory allocated in this mode is not guaranteed to be IOVA-contiguous. If large chunks of

IOVA-contiguous are required (with “large” defined as “more than one page”), it is recom-

mended to either use VFIO driver for all physical devices (so that IOVA and VA addresses can

be the same, thereby bypassing physical addresses entirely), or use legacy memory mode.

3.1. EAL in a Linux-userland Execution Environment 8

Programmer’s Guide, Release 18.11.0-rc5

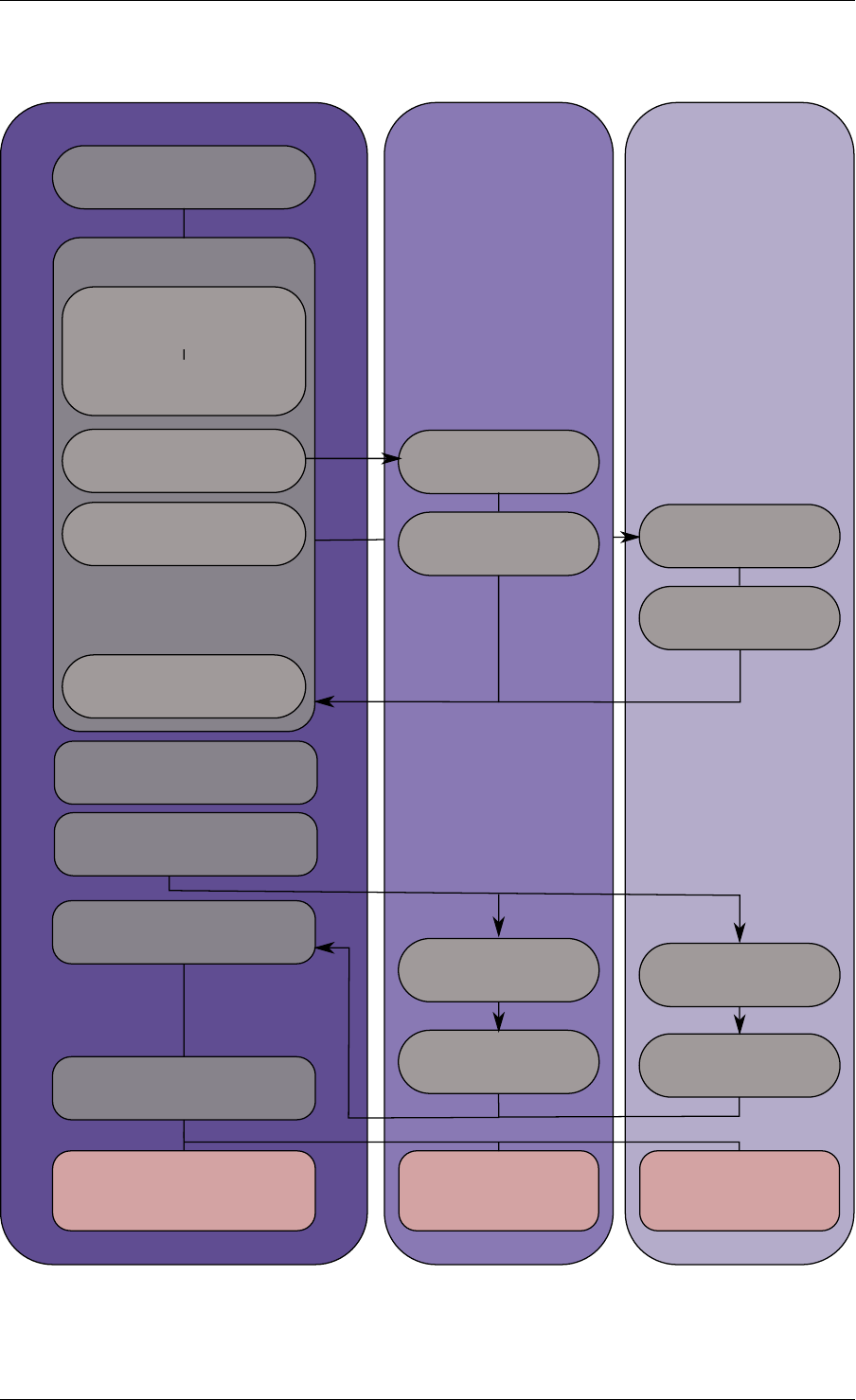

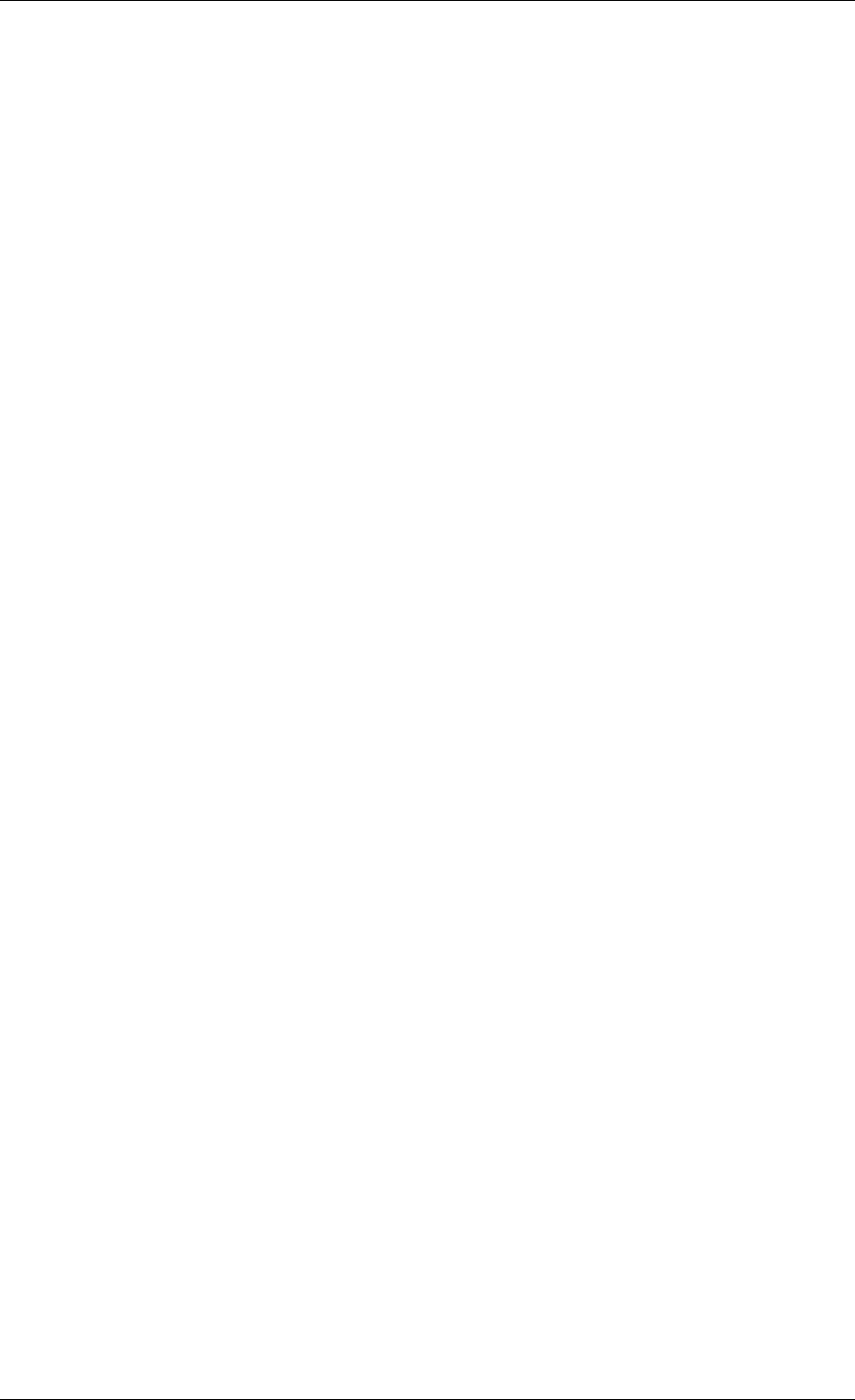

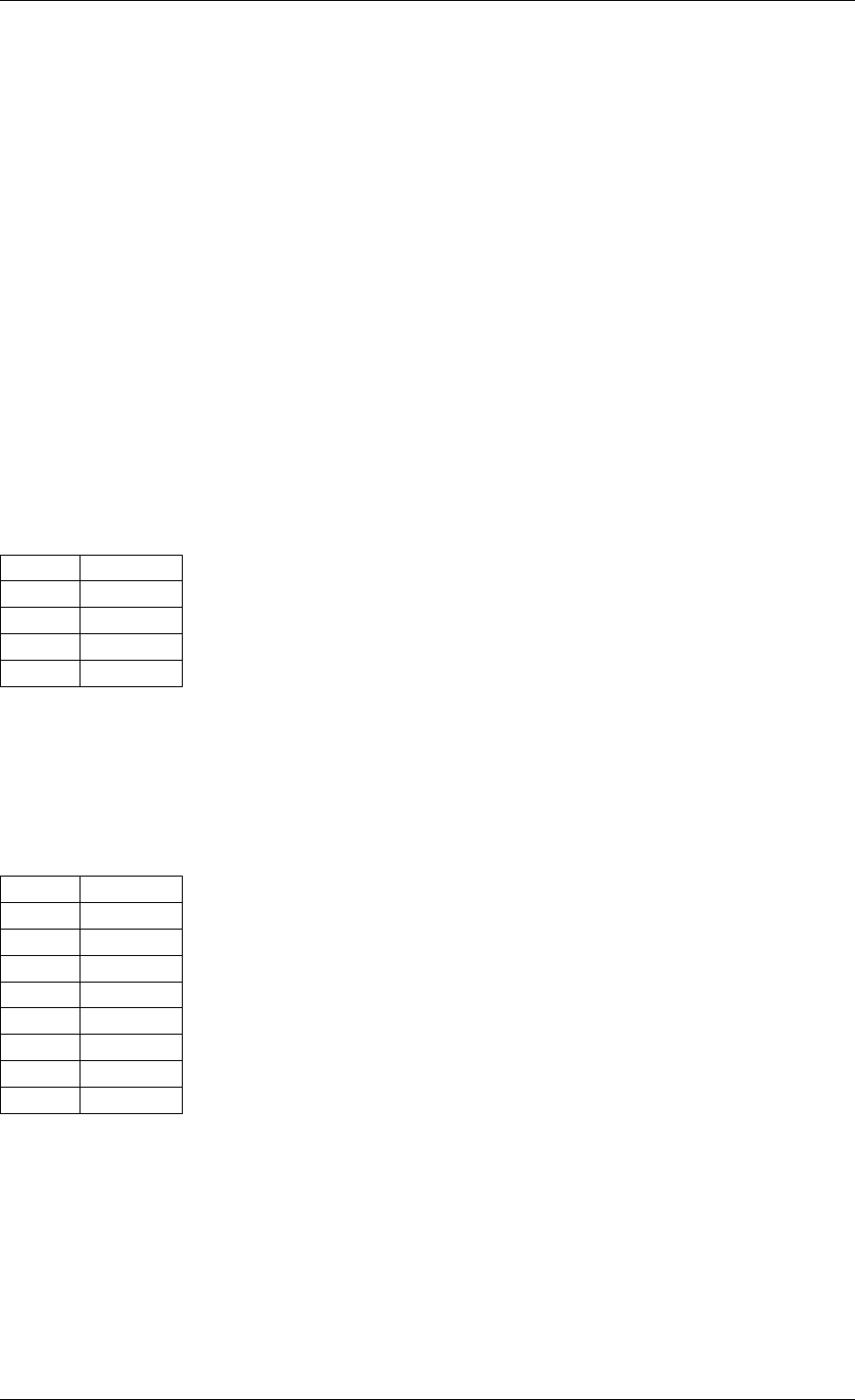

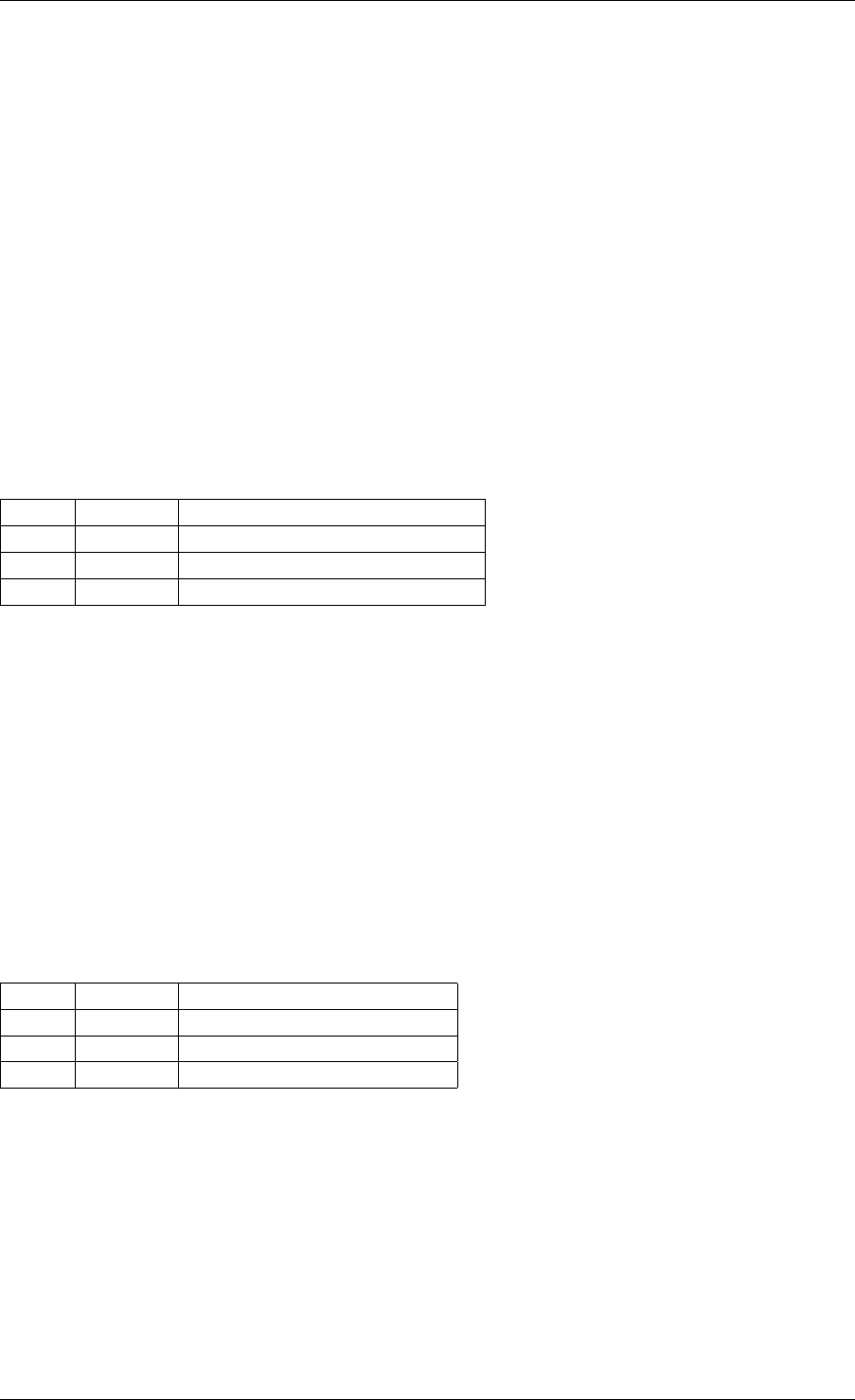

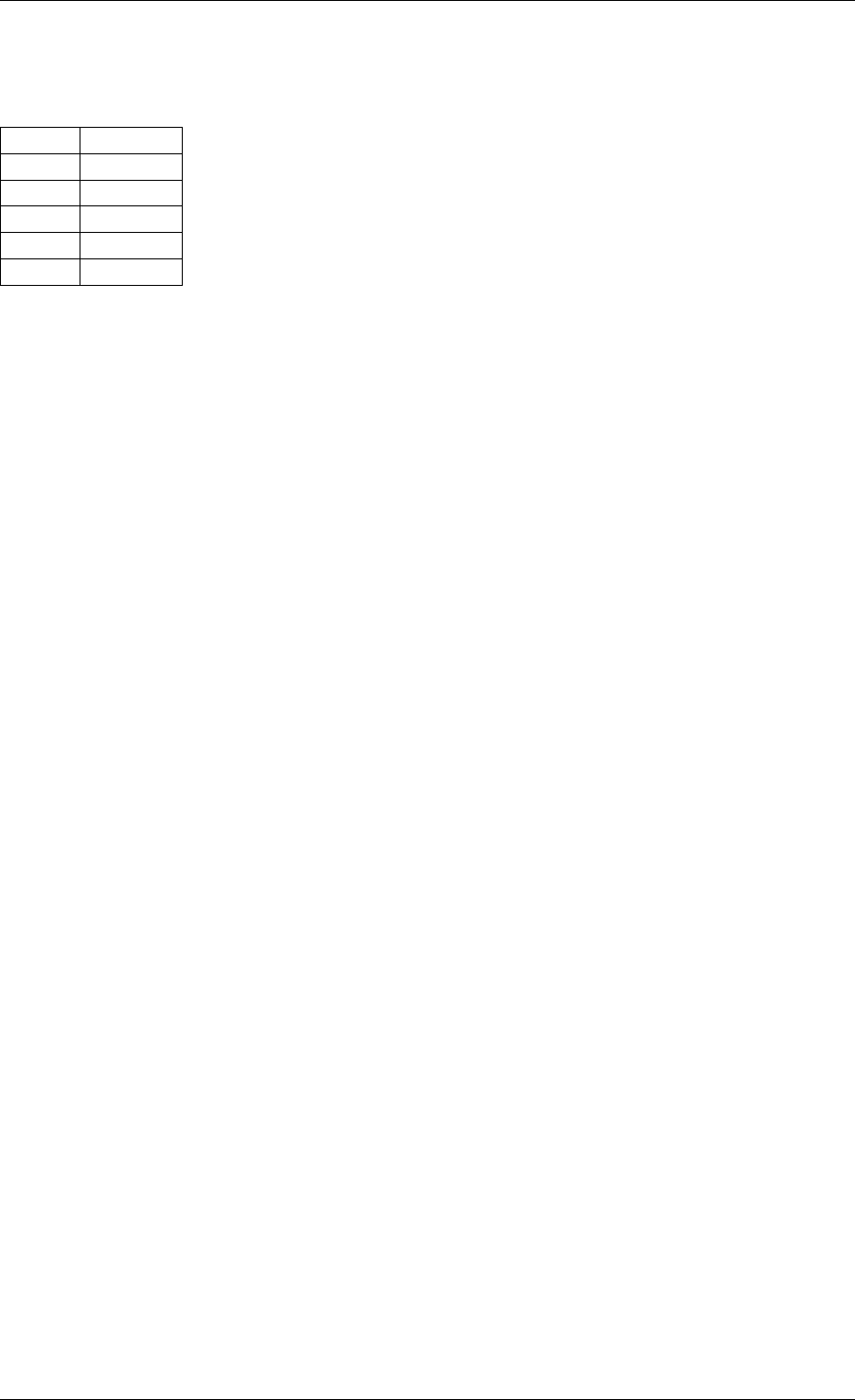

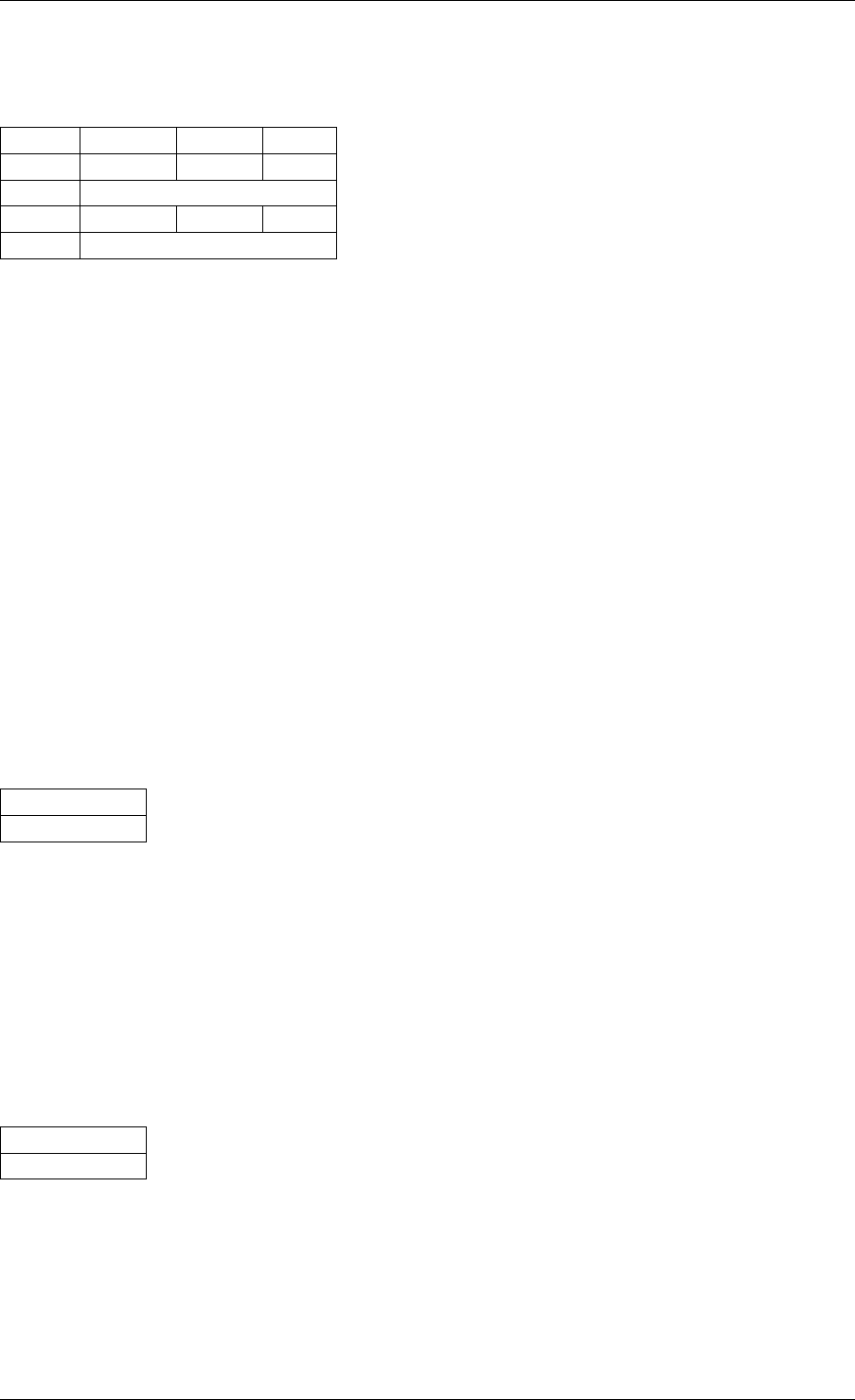

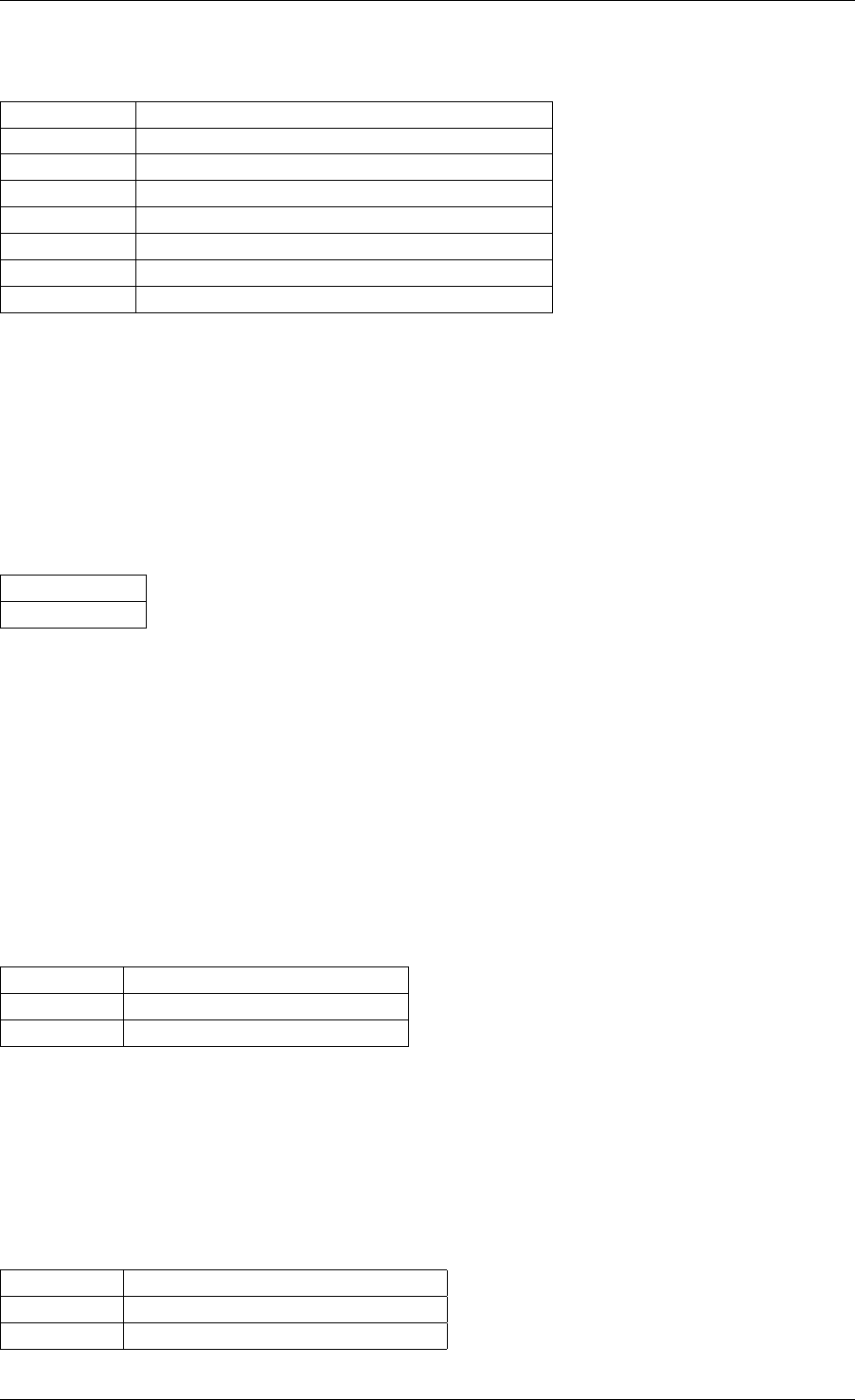

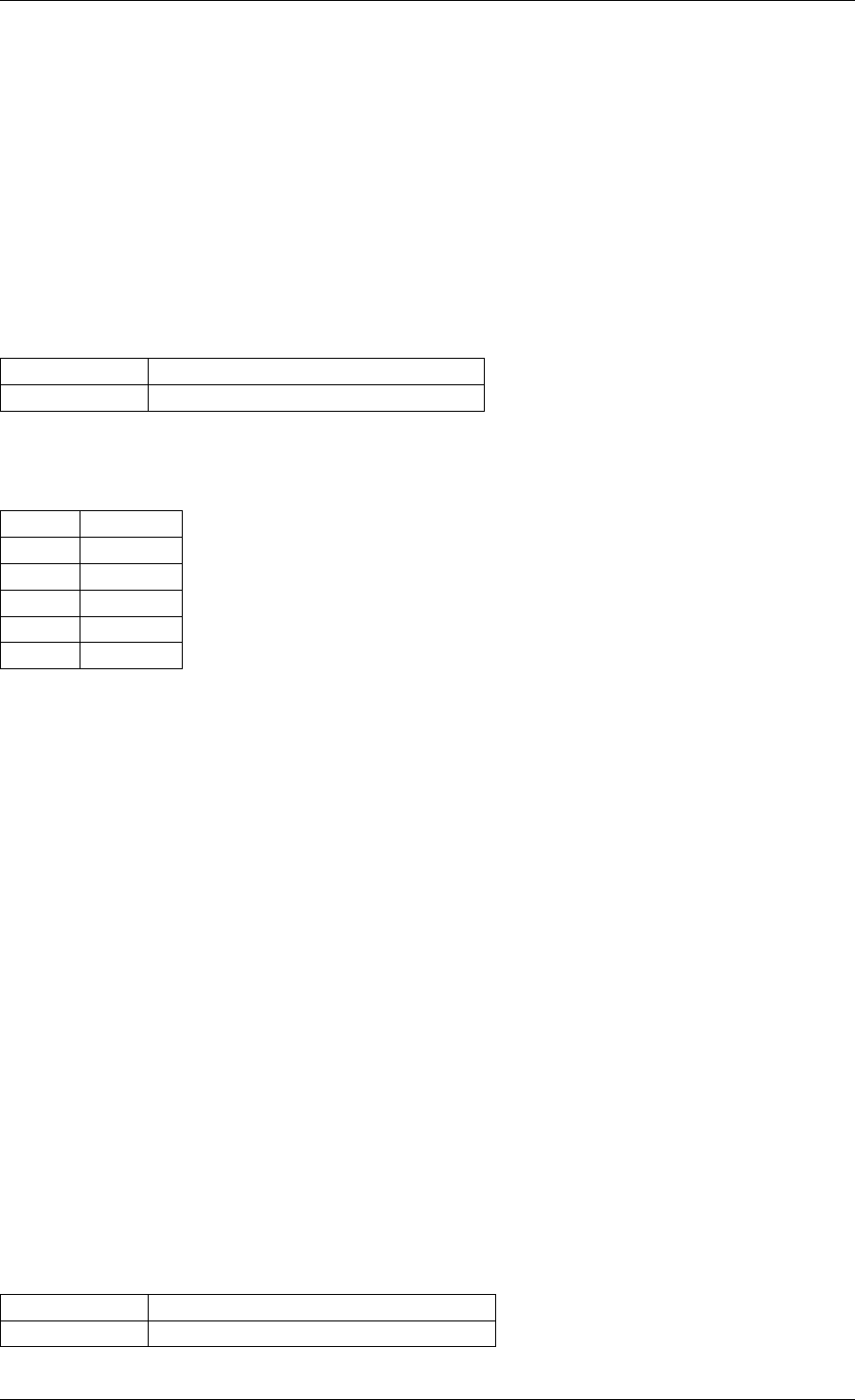

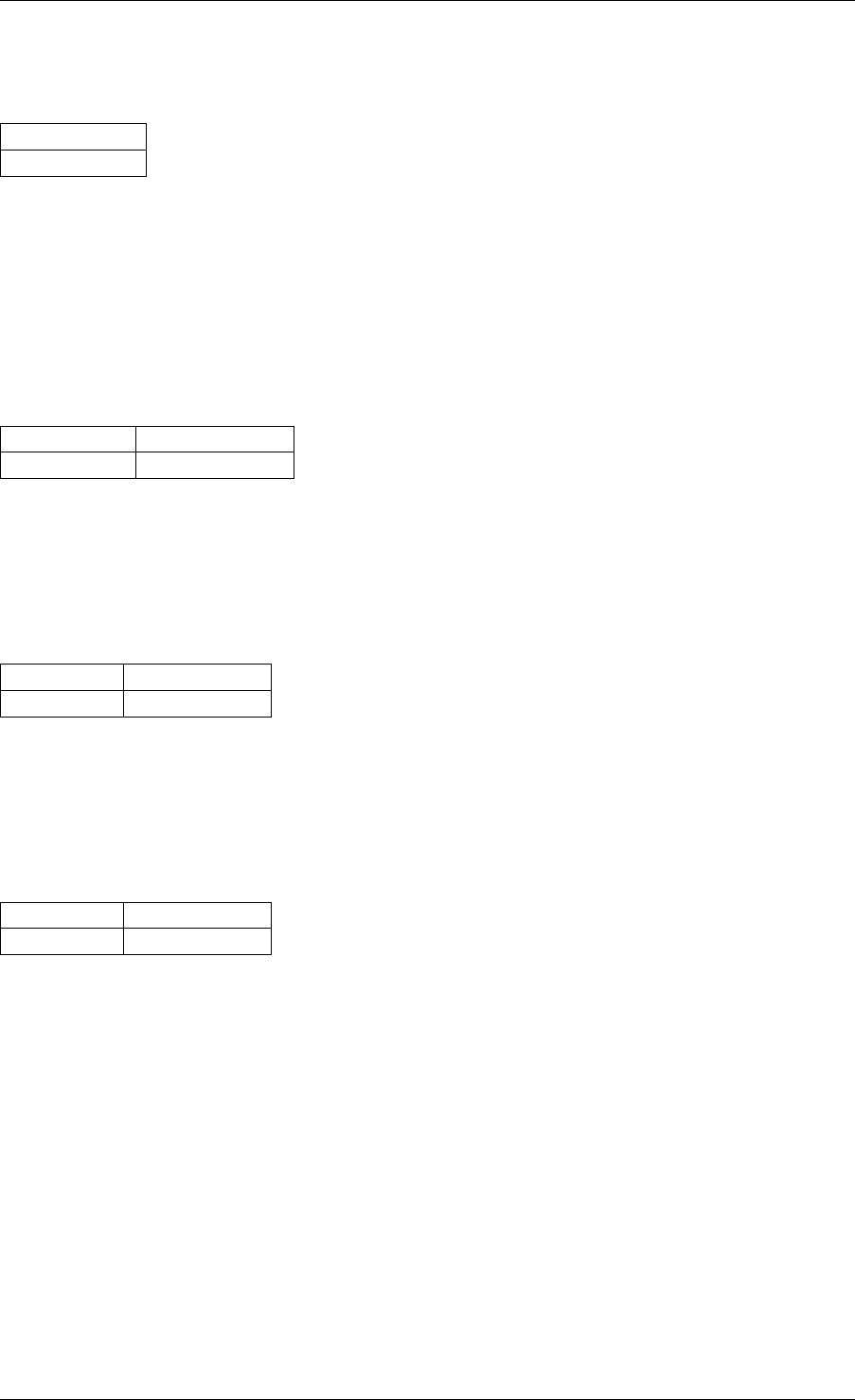

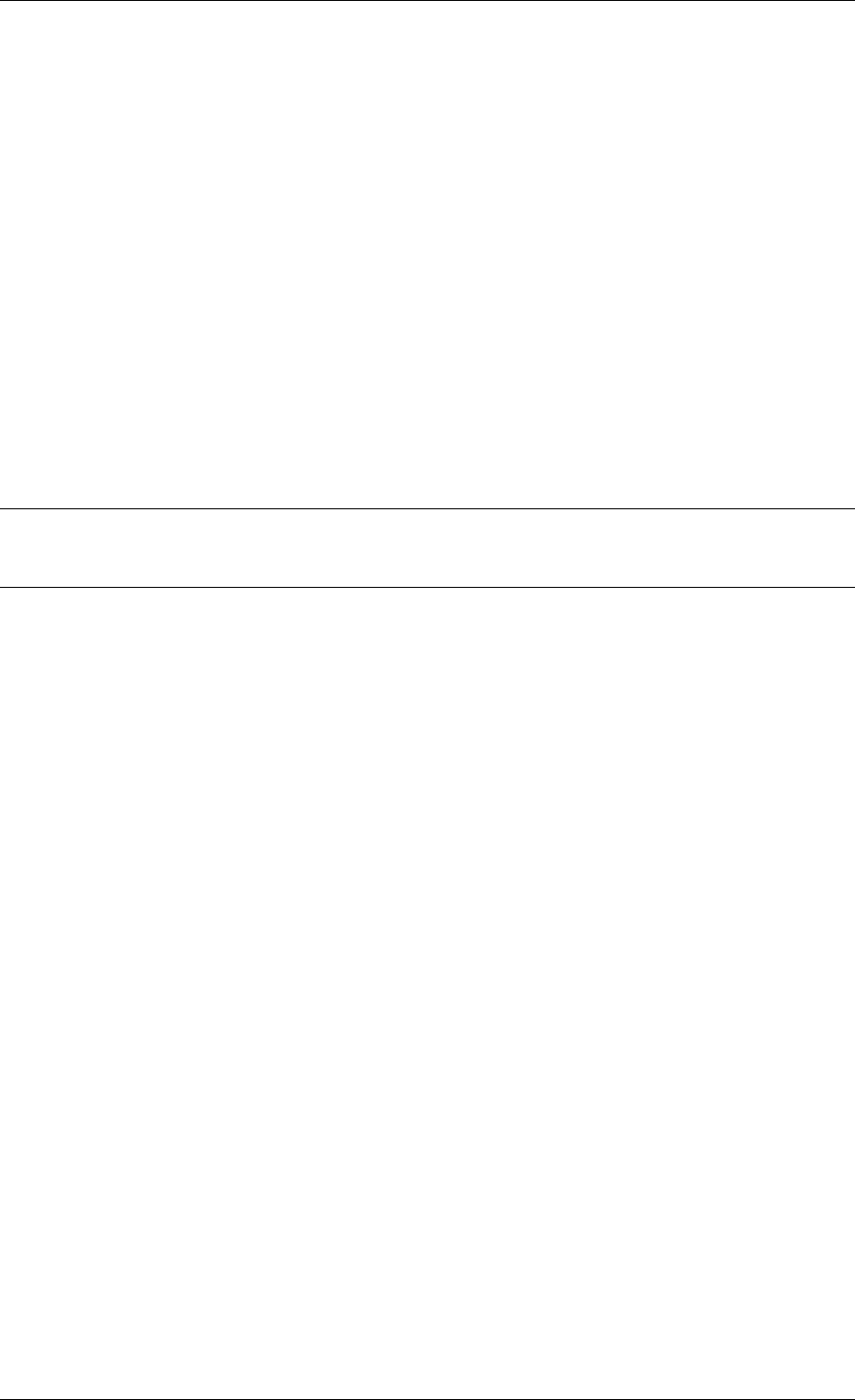

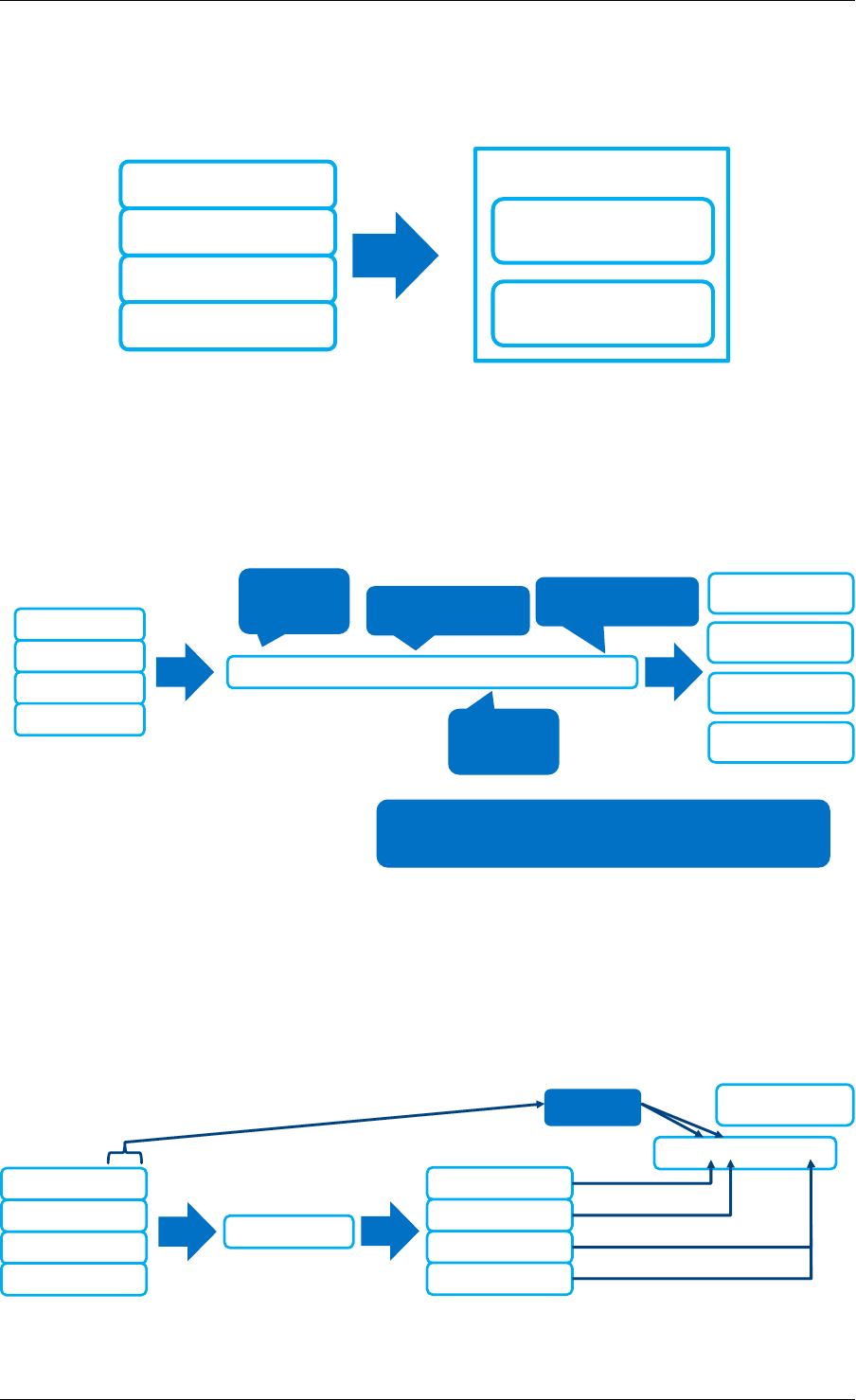

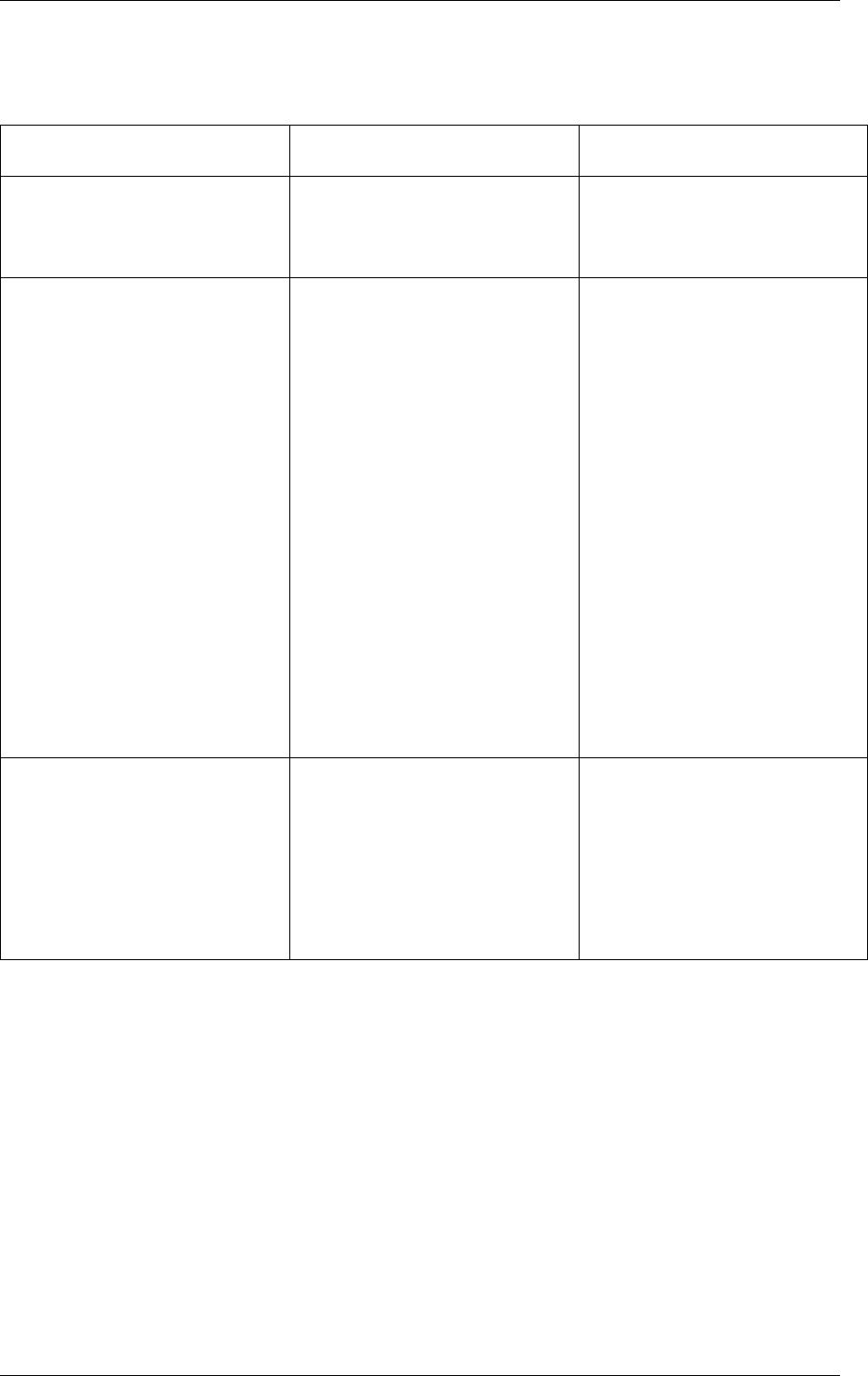

Master lcore lcore 1 lcore 2

main()

rte_eal_init()

rte_eal_memory_init()

rte_eal_logs_init()

rte_eal_pci_init()

...

pthread_create(1)

pthread_create(2)

per-thread init

wait per-thread init

wait

wait all threads

per_lcore_

app_init()

per_lcore_

app_init()

rte_eal_mp_wait_lcore()

application

...

wait wait

application

...

application

...

rte_eal_remote_lauch(app)

rte_eal_remote_lauch(

per_lcore_app_init)

other inits (libs, drivers)

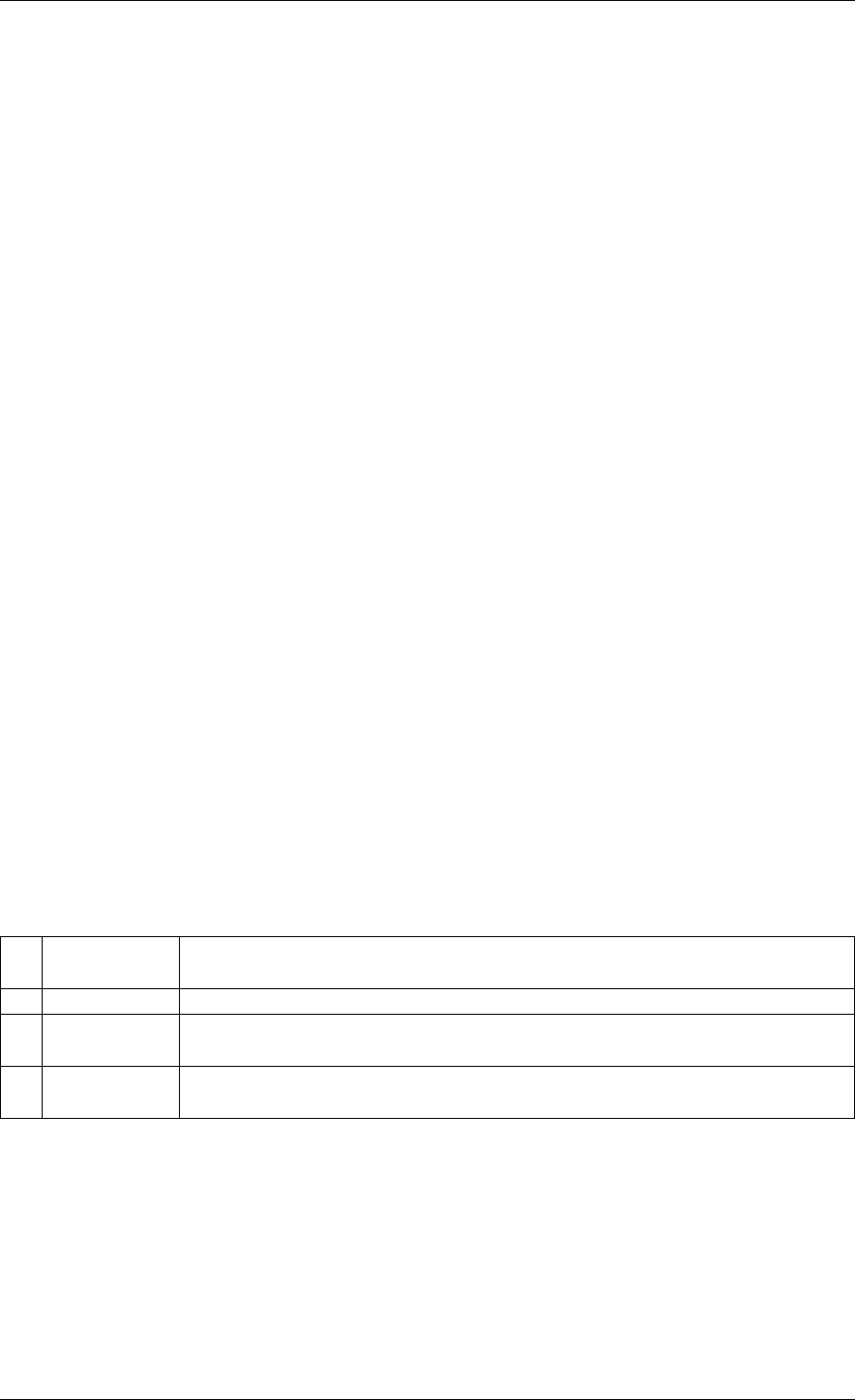

Fig. 3.1: EAL Initialization in a Linux Application Environment

3.1. EAL in a Linux-userland Execution Environment 9

Programmer’s Guide, Release 18.11.0-rc5

For chunks of memory which must be IOVA-contiguous, it is recommended to use

rte_memzone_reserve() function with RTE_MEMZONE_IOVA_CONTIG flag specified. This

way, memory allocator will ensure that, whatever memory mode is in use, either reserved

memory will satisfy the requirements, or the allocation will fail.

There is no need to preallocate any memory at startup using -m or --socket-mem command-

line parameters, however it is still possible to do so, in which case preallocate memory will be

“pinned” (i.e. will never be released by the application back to the system). It will be possible

to allocate more hugepages, and deallocate those, but any preallocated pages will not be

freed. If neither -m nor --socket-mem were specified, no memory will be preallocated, and

all memory will be allocated at runtime, as needed.

Another available option to use in dynamic memory mode is --single-file-segments

command-line option. This option will put pages in single files (per memseg list), as opposed

to creating a file per page. This is normally not needed, but can be useful for use cases like

userspace vhost, where there is limited number of page file descriptors that can be passed to

VirtIO.

If the application (or DPDK-internal code, such as device drivers) wishes to receive notifica-

tions about newly allocated memory, it is possible to register for memory event callbacks via

rte_mem_event_callback_register() function. This will call a callback function any

time DPDK’s memory map has changed.

If the application (or DPDK-internal code, such as device drivers) wishes to

be notified about memory allocations above specified threshold (and have a

chance to deny them), allocation validator callbacks are also available via

rte_mem_alloc_validator_callback_register() function.

A default validator callback is provided by EAL, which can be enabled with a --socket-limit

command-line option, for a simple way to limit maximum amount of memory that can be used

by DPDK application.

Note: In multiprocess scenario, all related processes (i.e. primary process, and secondary

processes running with the same prefix) must be in the same memory modes. That is, if

primary process is run in dynamic memory mode, all of its secondary processes must be run

in the same mode. The same is applicable to --single-file-segments command-line

option - both primary and secondary processes must shared this mode.

• Legacy memory mode

This mode is enabled by specifying --legacy-mem command-line switch to the EAL. This

switch will have no effect on FreeBSD as FreeBSD only supports legacy mode anyway.

This mode mimics historical behavior of EAL. That is, EAL will reserve all memory at startup,

sort all memory into large IOVA-contiguous chunks, and will not allow acquiring or releasing

hugepages from the system at runtime.

If neither -m nor --socket-mem were specified, the entire available hugepage memory will

be preallocated.

• 32-bit support

Additional restrictions are present when running in 32-bit mode. In dynamic memory mode, by

default maximum of 2 gigabytes of VA space will be preallocated, and all of it will be on master

lcore NUMA node unless --socket-mem flag is used.

3.1. EAL in a Linux-userland Execution Environment 10

Programmer’s Guide, Release 18.11.0-rc5

In legacy mode, VA space will only be preallocated for segments that were requested (plus

padding, to keep IOVA-contiguousness).

• Maximum amount of memory

All possible virtual memory space that can ever be used for hugepage mapping in a DPDK

process is preallocated at startup, thereby placing an upper limit on how much memory a

DPDK application can have. DPDK memory is stored in segment lists, each segment is strictly

one physical page. It is possible to change the amount of virtual memory being preallocated at

startup by editing the following config variables:

•CONFIG_RTE_MAX_MEMSEG_LISTS controls how many segment lists can DPDK have

•CONFIG_RTE_MAX_MEM_MB_PER_LIST controls how much megabytes of memory each

segment list can address

•CONFIG_RTE_MAX_MEMSEG_PER_LIST controls how many segments each segment

can have

•CONFIG_RTE_MAX_MEMSEG_PER_TYPE controls how many segments each memory

type can have (where “type” is defined as “page size + NUMA node” combination)

•CONFIG_RTE_MAX_MEM_MB_PER_TYPE controls how much megabytes of memory each

memory type can address

•CONFIG_RTE_MAX_MEM_MB places a global maximum on the amount of memory DPDK

can reserve

Normally, these options do not need to be changed.

Note: Preallocated virtual memory is not to be confused with preallocated hugepage memory!

All DPDK processes preallocate virtual memory at startup. Hugepages can later be mapped

into that preallocated VA space (if dynamic memory mode is enabled), and can optionally be

mapped into it at startup.

3.1.5 Support for Externally Allocated Memory

It is possible to use externally allocated memory in DPDK, using a set of malloc heap API’s.

Support for externally allocated memory is implemented through overloading the socket ID -

externally allocated heaps will have socket ID’s that would be considered invalid under normal

circumstances. Requesting an allocation to take place from a specified externally allocated

memory is a matter of supplying the correct socket ID to DPDK allocator, either directly (e.g.

through a call to rte_malloc) or indirectly (through data structure-specific allocation API’s

such as rte_ring_create).

Since there is no way DPDK can verify whether memory are is available or valid, this re-

sponsibility falls on the shoulders of the user. All multiprocess synchronization is also user’s

responsibility, as well as ensuring that all calls to add/attach/detach/remove memory are done

in the correct order. It is not required to attach to a memory area in all processes - only attach

to memory areas as needed.

The expected workflow is as follows:

• Get a pointer to memory area

• Create a named heap

3.1. EAL in a Linux-userland Execution Environment 11

Programmer’s Guide, Release 18.11.0-rc5

•Add memory area(s) to the heap

–If IOVA table is not specified, IOVA addresses will be assumed to be unavailable,

and DMA mappings will not be performed

–Other processes must attach to the memory area before they can use it

• Get socket ID used for the heap

• Use normal DPDK allocation procedures, using supplied socket ID

•If memory area is no longer needed, it can be removed from the heap

–Other processes must detach from this memory area before it can be removed

•If heap is no longer needed, remove it

–Socket ID will become invalid and will not be reused

For more information, please refer to rte_malloc API documentation, specifically the

rte_malloc_heap_*family of function calls.

3.1.6 Per-lcore and Shared Variables

Note: lcore refers to a logical execution unit of the processor, sometimes called a hardware

thread.

Shared variables are the default behavior. Per-lcore variables are implemented using Thread

Local Storage (TLS) to provide per-thread local storage.

3.1.7 Logs

A logging API is provided by EAL. By default, in a Linux application, logs are sent to syslog and

also to the console. However, the log function can be overridden by the user to use a different

logging mechanism.

Trace and Debug Functions

There are some debug functions to dump the stack in glibc. The rte_panic() function can

voluntarily provoke a SIG_ABORT, which can trigger the generation of a core file, readable by

gdb.

3.1.8 CPU Feature Identification

The EAL can query the CPU at runtime (using the rte_cpu_get_features() function) to deter-

mine which CPU features are available.

3.1.9 User Space Interrupt Event

• User Space Interrupt and Alarm Handling in Host Thread

3.1. EAL in a Linux-userland Execution Environment 12

Programmer’s Guide, Release 18.11.0-rc5

The EAL creates a host thread to poll the UIO device file descriptors to detect the interrupts.

Callbacks can be registered or unregistered by the EAL functions for a specific interrupt event

and are called in the host thread asynchronously. The EAL also allows timed callbacks to be

used in the same way as for NIC interrupts.

Note: In DPDK PMD, the only interrupts handled by the dedicated host thread are those for

link status change (link up and link down notification) and for sudden device removal.

• RX Interrupt Event

The receive and transmit routines provided by each PMD don’t limit themselves to execute in

polling thread mode. To ease the idle polling with tiny throughput, it’s useful to pause the polling

and wait until the wake-up event happens. The RX interrupt is the first choice to be such kind

of wake-up event, but probably won’t be the only one.

EAL provides the event APIs for this event-driven thread mode. Taking linuxapp as an example,

the implementation relies on epoll. Each thread can monitor an epoll instance in which all the

wake-up events’ file descriptors are added. The event file descriptors are created and mapped

to the interrupt vectors according to the UIO/VFIO spec. From bsdapp’s perspective, kqueue

is the alternative way, but not implemented yet.

EAL initializes the mapping between event file descriptors and interrupt vectors, while each

device initializes the mapping between interrupt vectors and queues. In this way, EAL actually

is unaware of the interrupt cause on the specific vector. The eth_dev driver takes responsibility

to program the latter mapping.

Note: Per queue RX interrupt event is only allowed in VFIO which supports multiple MSI-

X vector. In UIO, the RX interrupt together with other interrupt causes shares the same

vector. In this case, when RX interrupt and LSC(link status change) interrupt are both en-

abled(intr_conf.lsc == 1 && intr_conf.rxq == 1), only the former is capable.

The RX interrupt are controlled/enabled/disabled by ethdev APIs - ‘rte_eth_dev_rx_intr_*’.

They return failure if the PMD hasn’t support them yet. The intr_conf.rxq flag is used to turn on

the capability of RX interrupt per device.

• Device Removal Event

This event is triggered by a device being removed at a bus level. Its underlying resources may

have been made unavailable (i.e. PCI mappings unmapped). The PMD must make sure that

on such occurrence, the application can still safely use its callbacks.

This event can be subscribed to in the same way one would subscribe to a link status change

event. The execution context is thus the same, i.e. it is the dedicated interrupt host thread.

Considering this, it is likely that an application would want to close a device having emitted

a Device Removal Event. In such case, calling rte_eth_dev_close() can trigger it to

unregister its own Device Removal Event callback. Care must be taken not to close the device

from the interrupt handler context. It is necessary to reschedule such closing operation.

3.1.10 Blacklisting

The EAL PCI device blacklist functionality can be used to mark certain NIC ports as blacklisted,

so they are ignored by the DPDK. The ports to be blacklisted are identified using the PCIe*

description (Domain:Bus:Device.Function).

3.1. EAL in a Linux-userland Execution Environment 13

Programmer’s Guide, Release 18.11.0-rc5

3.1.11 Misc Functions

Locks and atomic operations are per-architecture (i686 and x86_64).

3.1.12 IOVA Mode Configuration

Auto detection of the IOVA mode, based on probing the bus and IOMMU configuration, may

not report the desired addressing mode when virtual devices that are not directly attached to

the bus are present. To facilitate forcing the IOVA mode to a specific value the EAL command

line option --iova-mode can be used to select either physical addressing(‘pa’) or virtual ad-

dressing(‘va’).

3.2 Memory Segments and Memory Zones (memzone)

The mapping of physical memory is provided by this feature in the EAL. As physical memory

can have gaps, the memory is described in a table of descriptors, and each descriptor (called

rte_memseg ) describes a physical page.

On top of this, the memzone allocator’s role is to reserve contiguous portions of physical mem-

ory. These zones are identified by a unique name when the memory is reserved.

The rte_memzone descriptors are also located in the configuration structure. This structure is

accessed using rte_eal_get_configuration(). The lookup (by name) of a memory zone returns

a descriptor containing the physical address of the memory zone.

Memory zones can be reserved with specific start address alignment by supplying the align

parameter (by default, they are aligned to cache line size). The alignment value should be a

power of two and not less than the cache line size (64 bytes). Memory zones can also be

reserved from either 2 MB or 1 GB hugepages, provided that both are available on the system.

Both memsegs and memzones are stored using rte_fbarray structures. Please refer to

DPDK API Reference for more information.

3.3 Multiple pthread

DPDK usually pins one pthread per core to avoid the overhead of task switching. This allows

for significant performance gains, but lacks flexibility and is not always efficient.

Power management helps to improve the CPU efficiency by limiting the CPU runtime frequency.

However, alternately it is possible to utilize the idle cycles available to take advantage of the

full capability of the CPU.

By taking advantage of cgroup, the CPU utilization quota can be simply assigned. This gives

another way to improve the CPU efficiency, however, there is a prerequisite; DPDK must handle

the context switching between multiple pthreads per core.

For further flexibility, it is useful to set pthread affinity not only to a CPU but to a CPU set.

3.2. Memory Segments and Memory Zones (memzone) 14

Programmer’s Guide, Release 18.11.0-rc5

3.3.1 EAL pthread and lcore Affinity

The term “lcore” refers to an EAL thread, which is really a Linux/FreeBSD pthread. “EAL

pthreads” are created and managed by EAL and execute the tasks issued by remote_launch.

In each EAL pthread, there is a TLS (Thread Local Storage) called _lcore_id for unique identi-

fication. As EAL pthreads usually bind 1:1 to the physical CPU, the _lcore_id is typically equal

to the CPU ID.

When using multiple pthreads, however, the binding is no longer always 1:1 between an EAL

pthread and a specified physical CPU. The EAL pthread may have affinity to a CPU set, and

as such the _lcore_id will not be the same as the CPU ID. For this reason, there is an EAL

long option ‘–lcores’ defined to assign the CPU affinity of lcores. For a specified lcore ID or ID

group, the option allows setting the CPU set for that EAL pthread.

The format pattern: –lcores=’<lcore_set>[@cpu_set][,<lcore_set>[@cpu_set],...]’

‘lcore_set’ and ‘cpu_set’ can be a single number, range or a group.

A number is a “digit([0-9]+)”; a range is “<number>-<number>”; a group is “(<num-

ber|range>[,<number|range>,...])”.

If a ‘@cpu_set’ value is not supplied, the value of ‘cpu_set’ will default to the value of ‘lcore_set’.

For example, "--lcores='1,2@(5-7),(3-5)@(0,2),(0,6),7-8'" which means start 9 EAL thread;

lcore 0 runs on cpuset 0x41 (cpu 0,6);

lcore 1 runs on cpuset 0x2 (cpu 1);

lcore 2 runs on cpuset 0xe0 (cpu 5,6,7);

lcore 3,4,5 runs on cpuset 0x5 (cpu 0,2);

lcore 6 runs on cpuset 0x41 (cpu 0,6);

lcore 7 runs on cpuset 0x80 (cpu 7);

lcore 8 runs on cpuset 0x100 (cpu 8).

Using this option, for each given lcore ID, the associated CPUs can be assigned. It’s also

compatible with the pattern of corelist(‘-l’) option.

3.3.2 non-EAL pthread support

It is possible to use the DPDK execution context with any user pthread (aka. Non-EAL

pthreads). In a non-EAL pthread, the _lcore_id is always LCORE_ID_ANY which identifies

that it is not an EAL thread with a valid, unique, _lcore_id. Some libraries will use an alter-

native unique ID (e.g. TID), some will not be impacted at all, and some will work but with

limitations (e.g. timer and mempool libraries).

All these impacts are mentioned in Known Issues section.

3.3.3 Public Thread API

There are two public APIs rte_thread_set_affinity() and

rte_thread_get_affinity() introduced for threads. When they’re used in any pthread

context, the Thread Local Storage(TLS) will be set/get.

Those TLS include _cpuset and _socket_id:

•_cpuset stores the CPUs bitmap to which the pthread is affinitized.

•_socket_id stores the NUMA node of the CPU set. If the CPUs in CPU set belong to

different NUMA node, the _socket_id will be set to SOCKET_ID_ANY.

3.3. Multiple pthread 15

Programmer’s Guide, Release 18.11.0-rc5

3.3.4 Known Issues

• rte_mempool

The rte_mempool uses a per-lcore cache inside the mempool. For non-EAL pthreads,

rte_lcore_id() will not return a valid number. So for now, when rte_mempool is used

with non-EAL pthreads, the put/get operations will bypass the default mempool cache and

there is a performance penalty because of this bypass. Only user-owned external caches

can be used in a non-EAL context in conjunction with rte_mempool_generic_put()

and rte_mempool_generic_get() that accept an explicit cache parameter.

• rte_ring

rte_ring supports multi-producer enqueue and multi-consumer dequeue. However, it is

non-preemptive, this has a knock on effect of making rte_mempool non-preemptable.

Note: The “non-preemptive” constraint means:

–a pthread doing multi-producers enqueues on a given ring must not be preempted

by another pthread doing a multi-producer enqueue on the same ring.

–a pthread doing multi-consumers dequeues on a given ring must not be preempted

by another pthread doing a multi-consumer dequeue on the same ring.

Bypassing this constraint may cause the 2nd pthread to spin until the 1st one is scheduled

again. Moreover, if the 1st pthread is preempted by a context that has an higher priority,

it may even cause a dead lock.

This means, use cases involving preemptible pthreads should consider using rte_ring

carefully.

1. It CAN be used for preemptible single-producer and single-consumer use case.

2. It CAN be used for non-preemptible multi-producer and preemptible single-

consumer use case.

3. It CAN be used for preemptible single-producer and non-preemptible multi-

consumer use case.

4. It MAY be used by preemptible multi-producer and/or preemptible multi-consumer

pthreads whose scheduling policy are all SCHED_OTHER(cfs), SCHED_IDLE or

SCHED_BATCH. User SHOULD be aware of the performance penalty before using

it.

5. It MUST not be used by multi-producer/consumer pthreads, whose scheduling poli-

cies are SCHED_FIFO or SCHED_RR.

• rte_timer

Running rte_timer_manage() on a non-EAL pthread is not allowed. However, reset-

ting/stopping the timer from a non-EAL pthread is allowed.

• rte_log

In non-EAL pthreads, there is no per thread loglevel and logtype, global loglevels are

used.

• misc

3.3. Multiple pthread 16

Programmer’s Guide, Release 18.11.0-rc5

The debug statistics of rte_ring, rte_mempool and rte_timer are not supported in a non-

EAL pthread.

3.3.5 cgroup control

The following is a simple example of cgroup control usage, there are two pthreads(t0 and t1)

doing packet I/O on the same core ($CPU). We expect only 50% of CPU spend on packet IO.

mkdir /sys/fs/cgroup/cpu/pkt_io

mkdir /sys/fs/cgroup/cpuset/pkt_io

echo $cpu > /sys/fs/cgroup/cpuset/cpuset.cpus

echo $t0 > /sys/fs/cgroup/cpu/pkt_io/tasks

echo $t0 > /sys/fs/cgroup/cpuset/pkt_io/tasks

echo $t1 > /sys/fs/cgroup/cpu/pkt_io/tasks

echo $t1 > /sys/fs/cgroup/cpuset/pkt_io/tasks

cd /sys/fs/cgroup/cpu/pkt_io

echo 100000 > pkt_io/cpu.cfs_period_us

echo 50000 > pkt_io/cpu.cfs_quota_us

3.4 Malloc

The EAL provides a malloc API to allocate any-sized memory.

The objective of this API is to provide malloc-like functions to allow allocation from hugepage

memory and to facilitate application porting. The DPDK API Reference manual describes the

available functions.

Typically, these kinds of allocations should not be done in data plane processing because they

are slower than pool-based allocation and make use of locks within the allocation and free

paths. However, they can be used in configuration code.

Refer to the rte_malloc() function description in the DPDK API Reference manual for more

information.

3.4.1 Cookies

When CONFIG_RTE_MALLOC_DEBUG is enabled, the allocated memory contains overwrite

protection fields to help identify buffer overflows.

3.4.2 Alignment and NUMA Constraints

The rte_malloc() takes an align argument that can be used to request a memory area that is

aligned on a multiple of this value (which must be a power of two).

On systems with NUMA support, a call to the rte_malloc() function will return memory that has

been allocated on the NUMA socket of the core which made the call. A set of APIs is also

provided, to allow memory to be explicitly allocated on a NUMA socket directly, or by allocated

on the NUMA socket where another core is located, in the case where the memory is to be

used by a logical core other than on the one doing the memory allocation.

3.4. Malloc 17

Programmer’s Guide, Release 18.11.0-rc5

3.4.3 Use Cases

This API is meant to be used by an application that requires malloc-like functions at initialization

time.

For allocating/freeing data at runtime, in the fast-path of an application, the memory pool library

should be used instead.

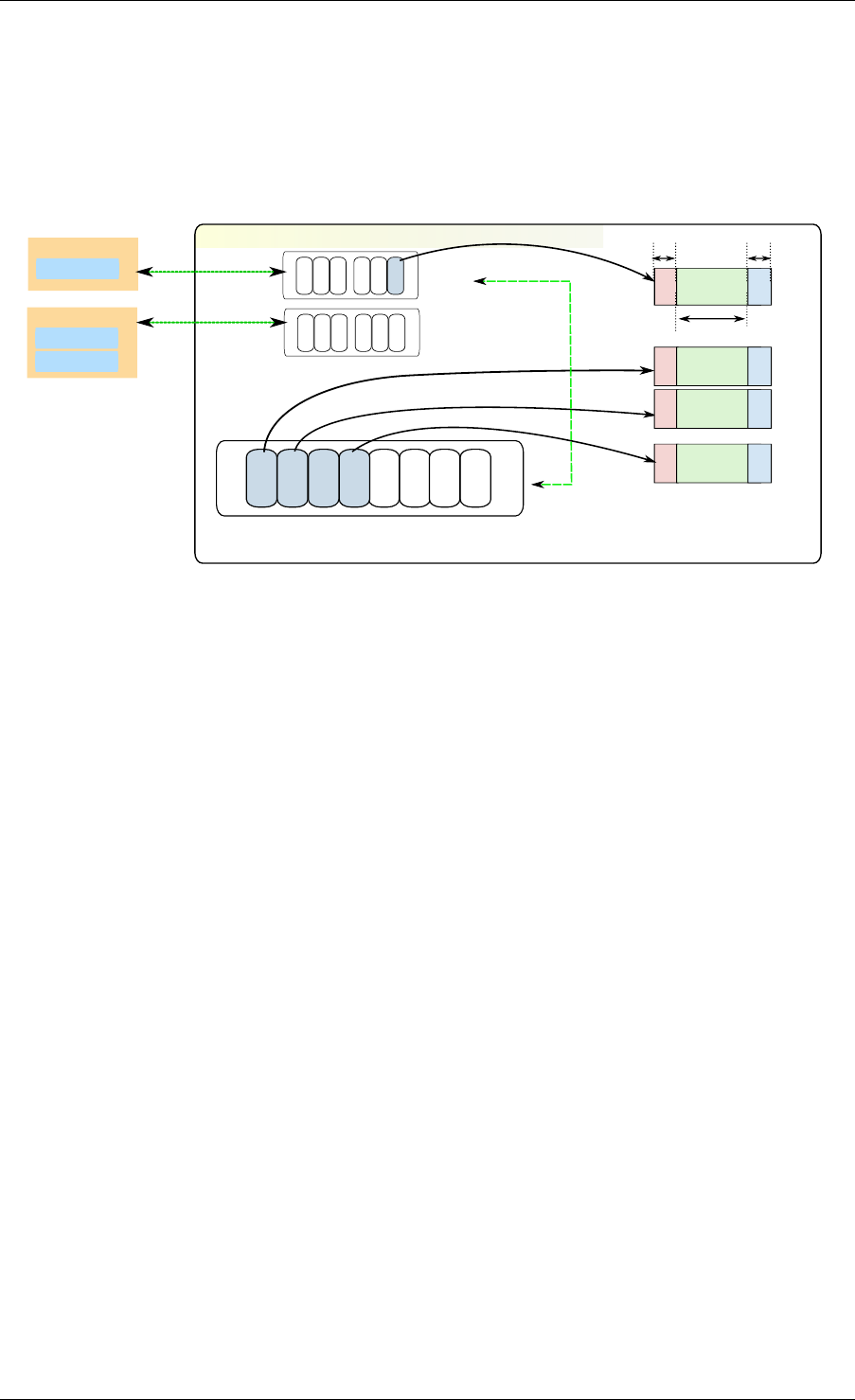

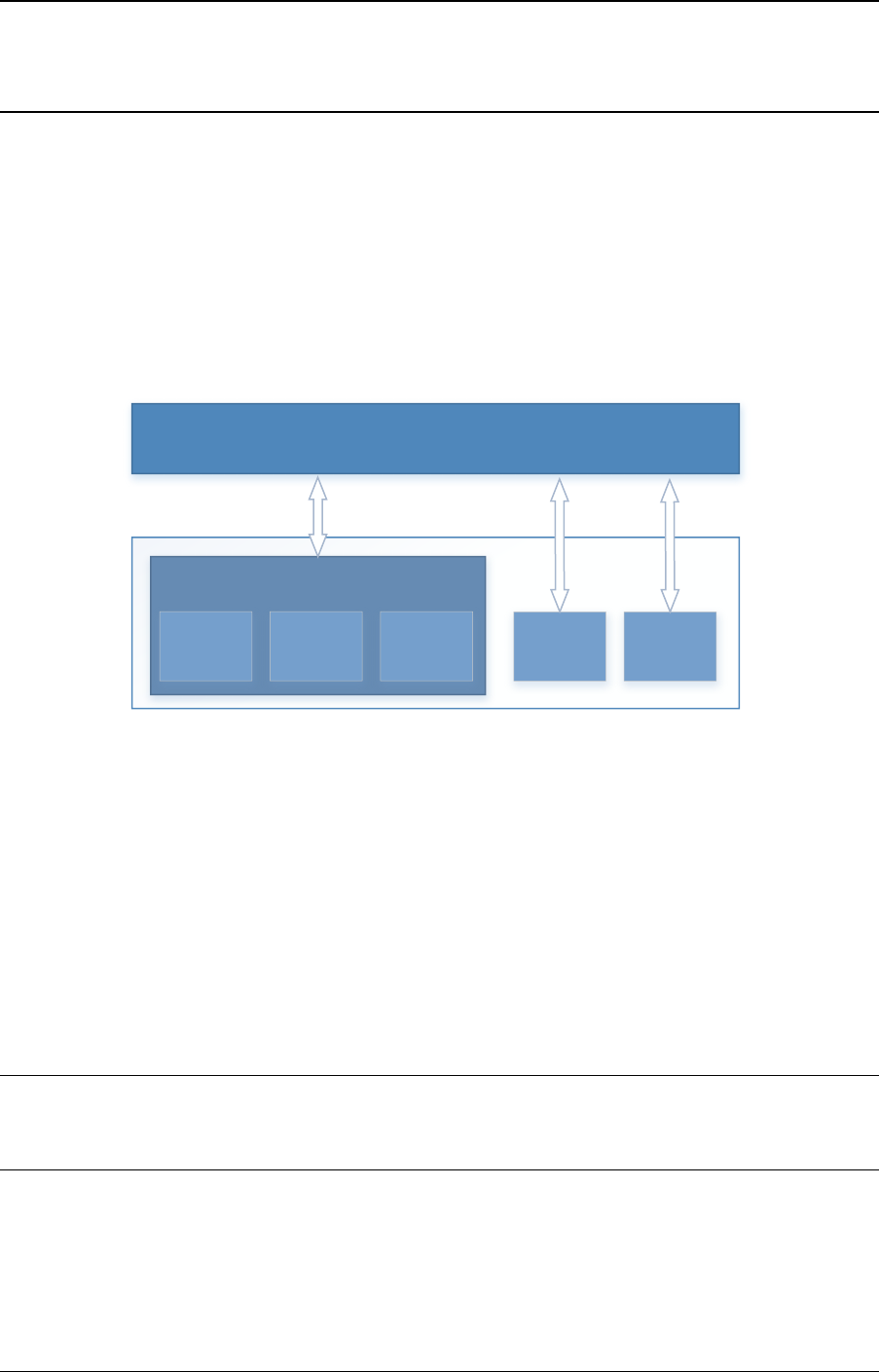

3.4.4 Internal Implementation

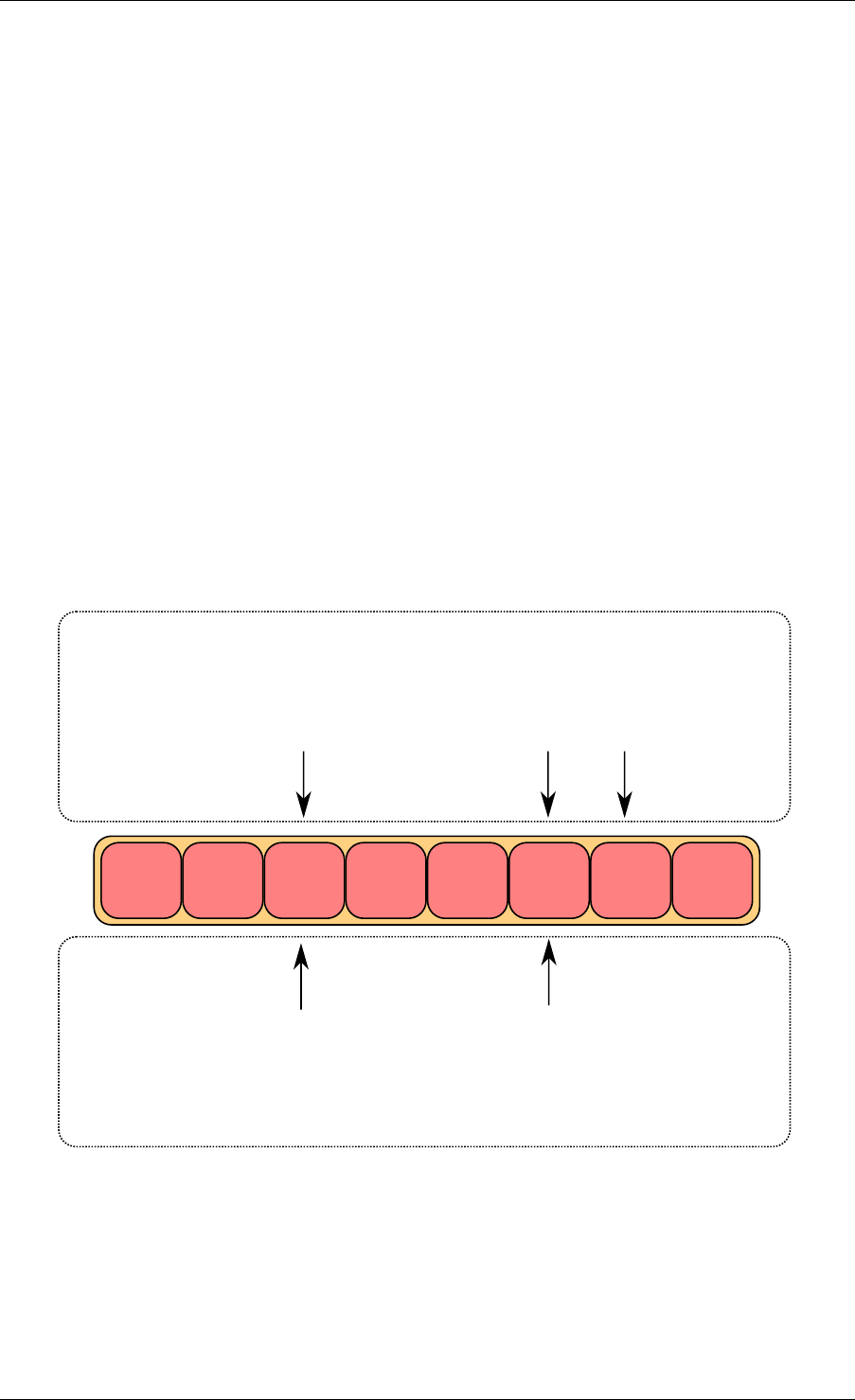

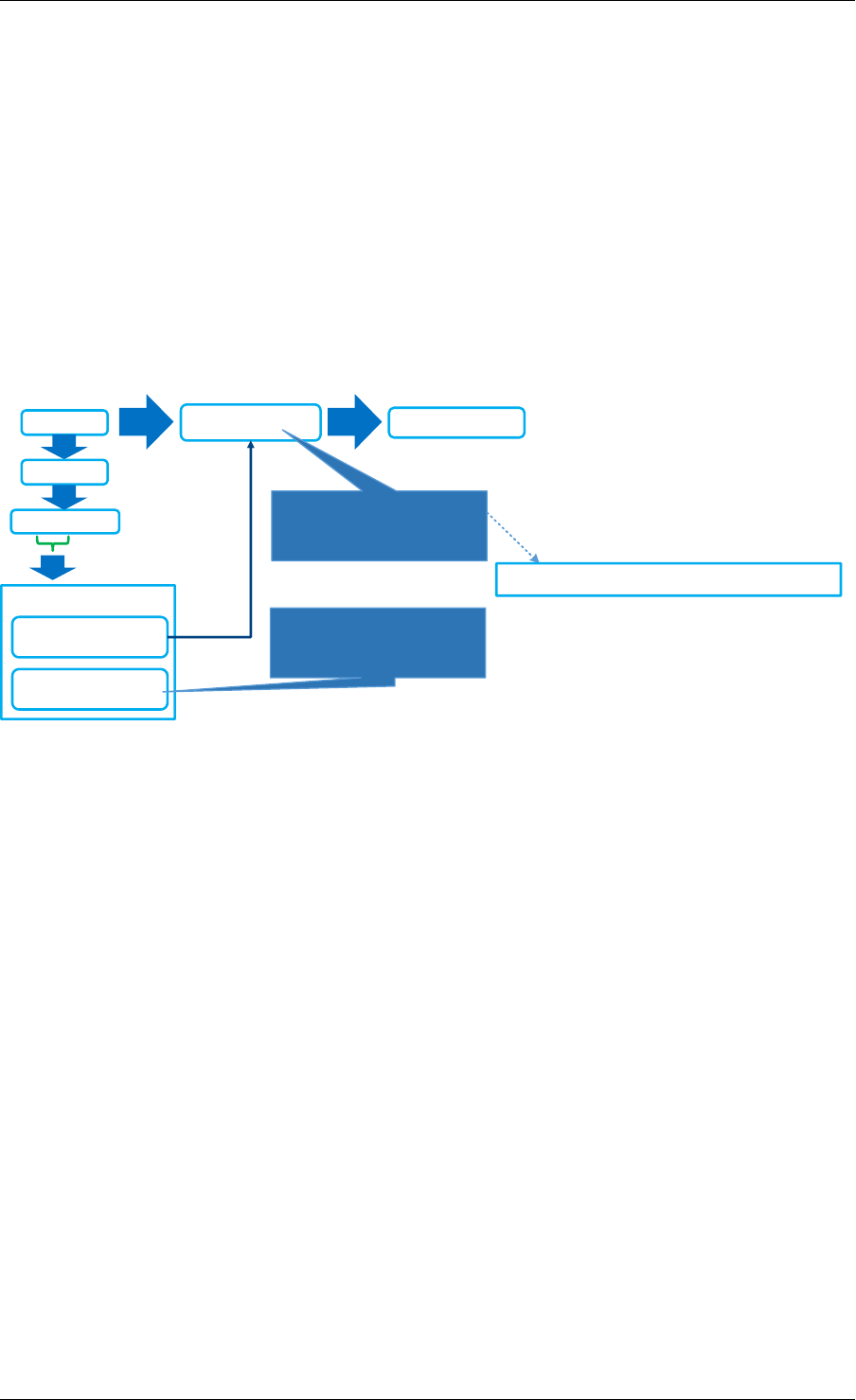

Data Structures

There are two data structure types used internally in the malloc library:

• struct malloc_heap - used to track free space on a per-socket basis

• struct malloc_elem - the basic element of allocation and free-space tracking inside the

library.

Structure: malloc_heap

The malloc_heap structure is used to manage free space on a per-socket basis. Internally,

there is one heap structure per NUMA node, which allows us to allocate memory to a thread

based on the NUMA node on which this thread runs. While this does not guarantee that the

memory will be used on that NUMA node, it is no worse than a scheme where the memory is

always allocated on a fixed or random node.

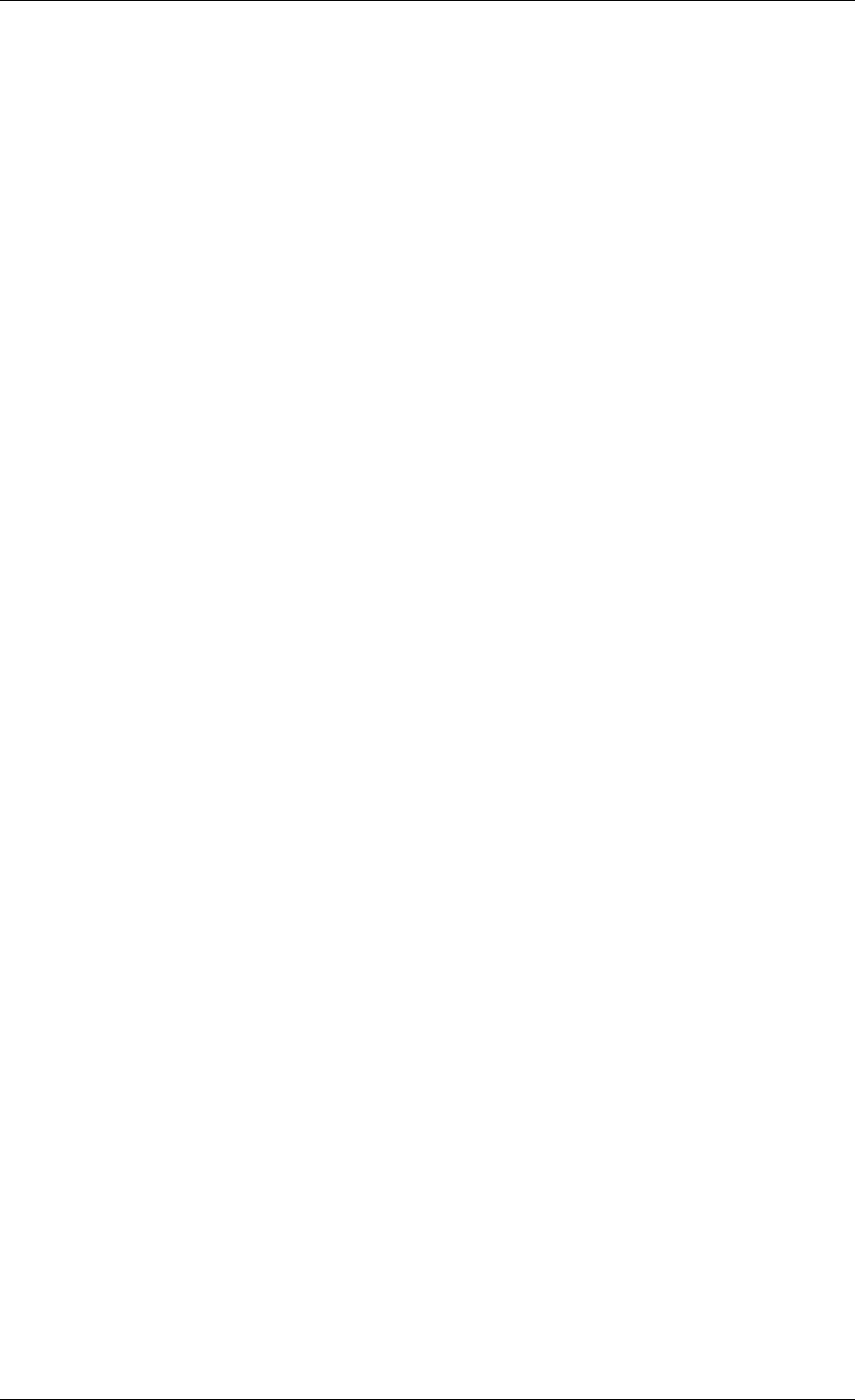

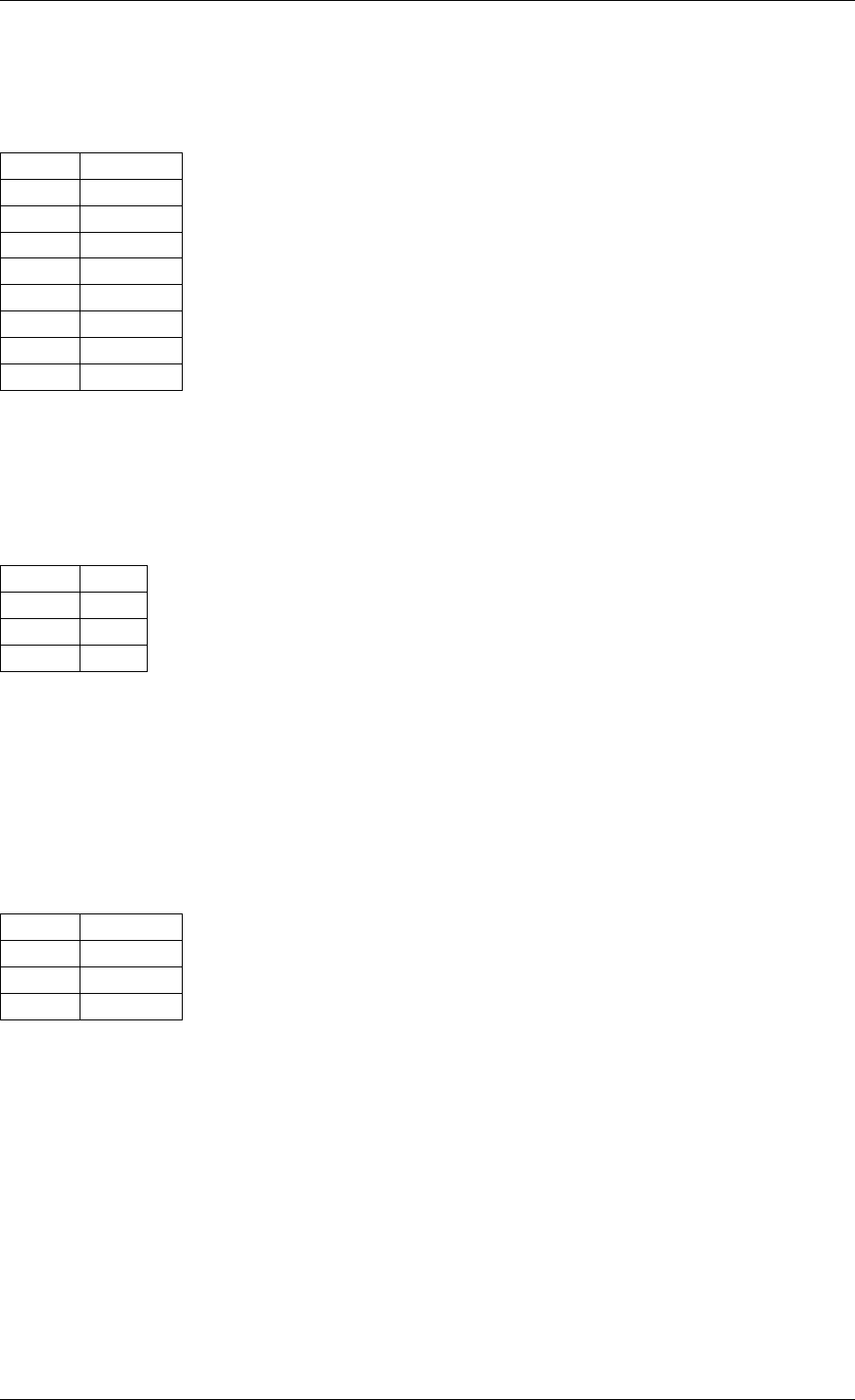

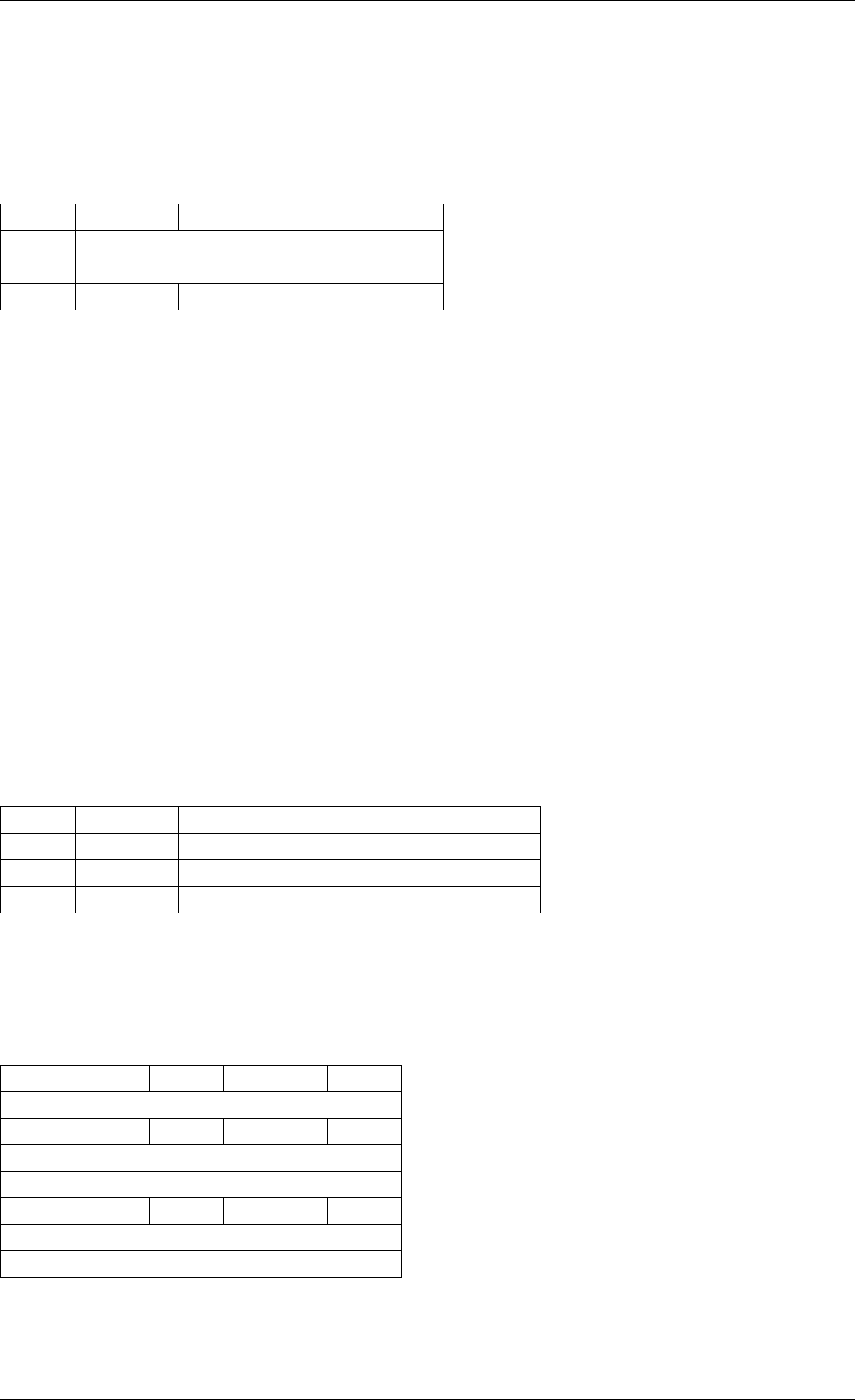

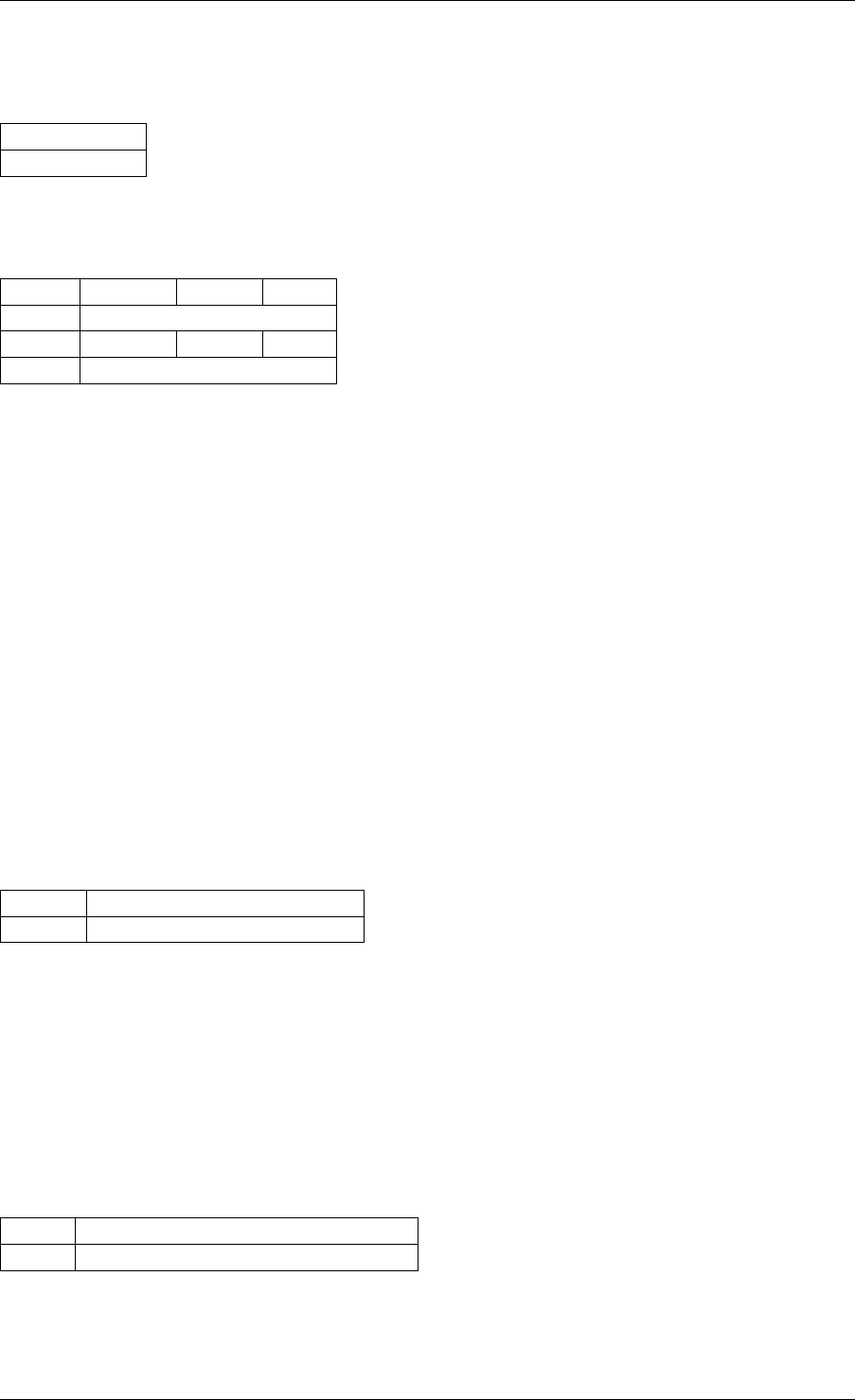

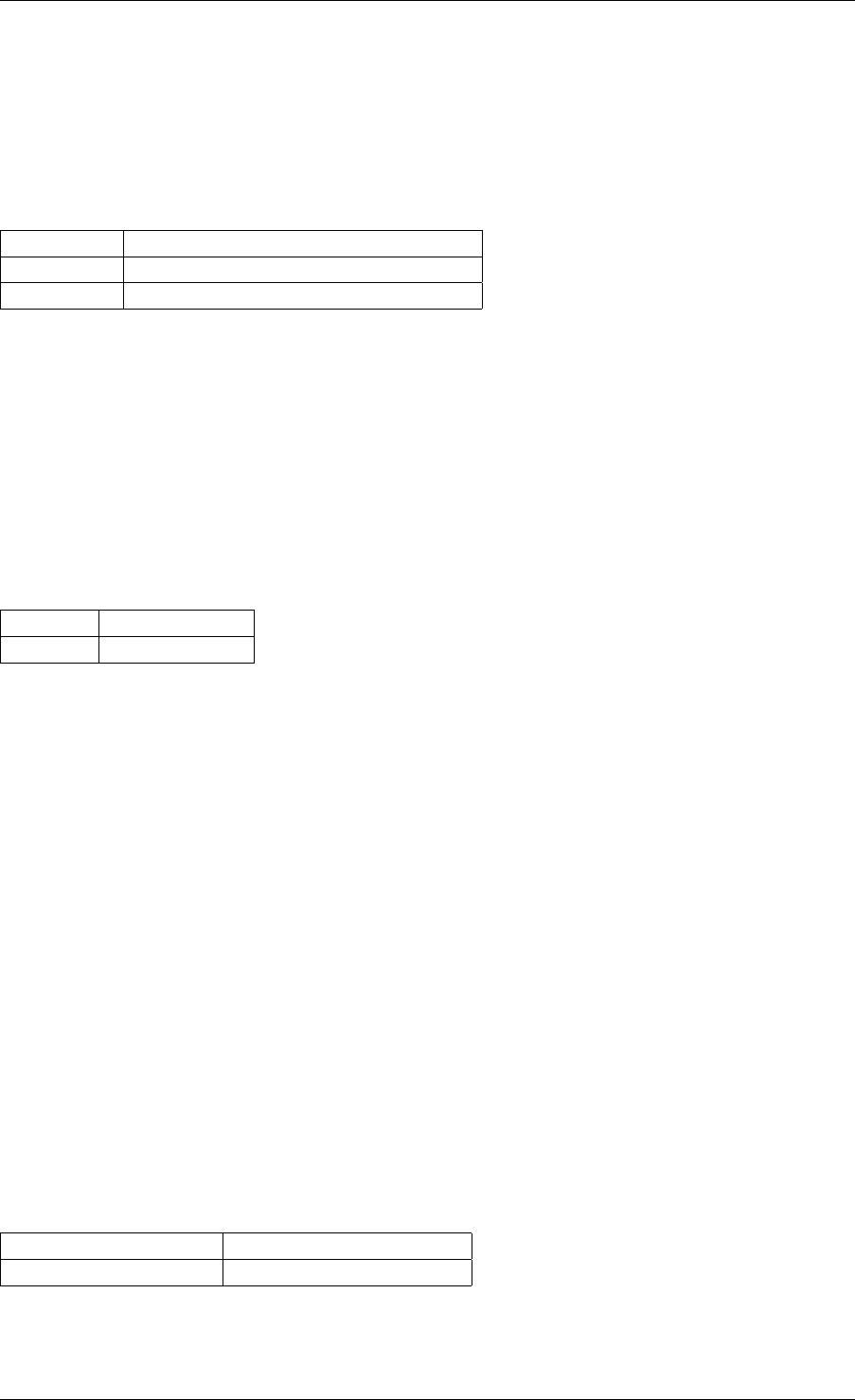

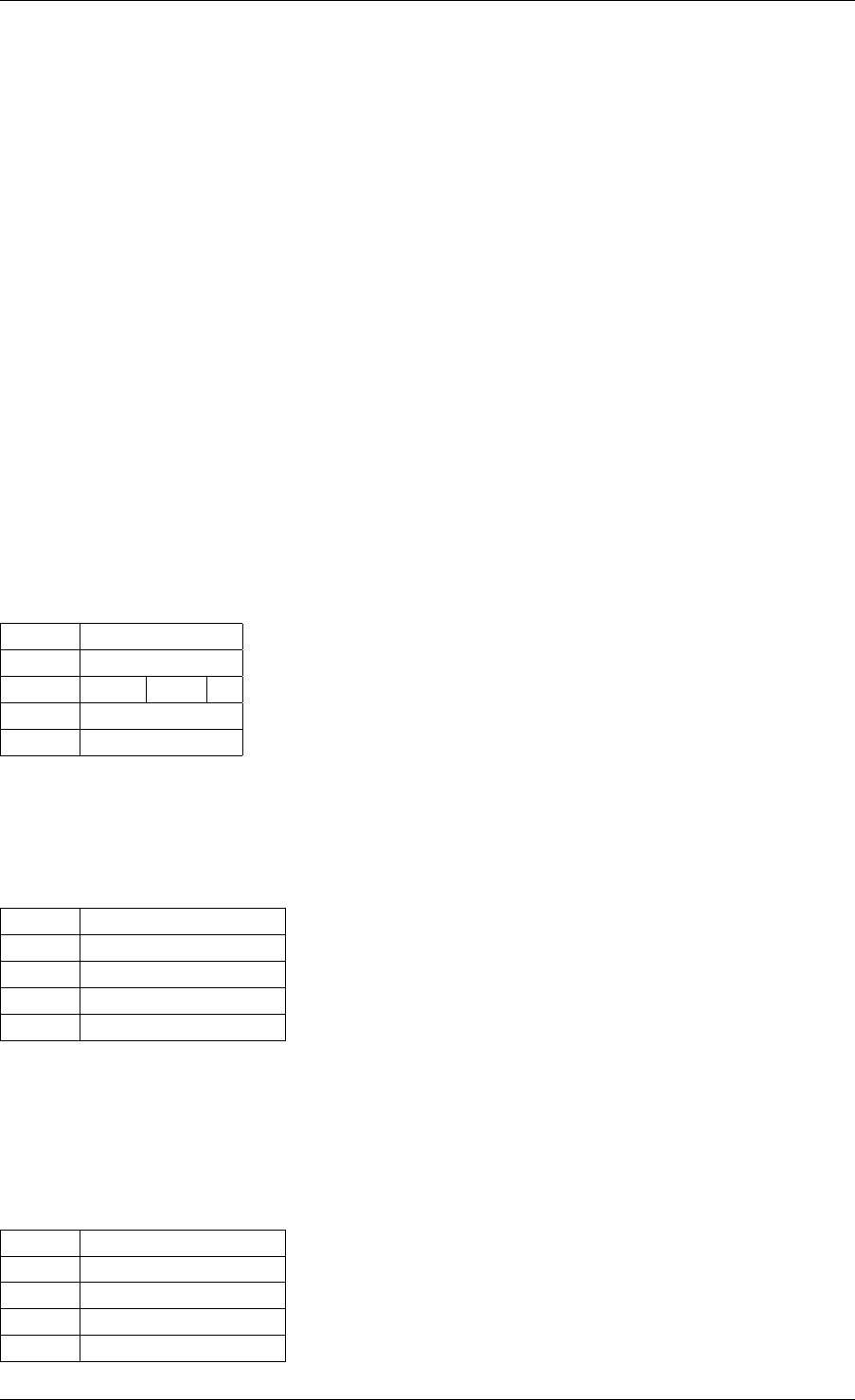

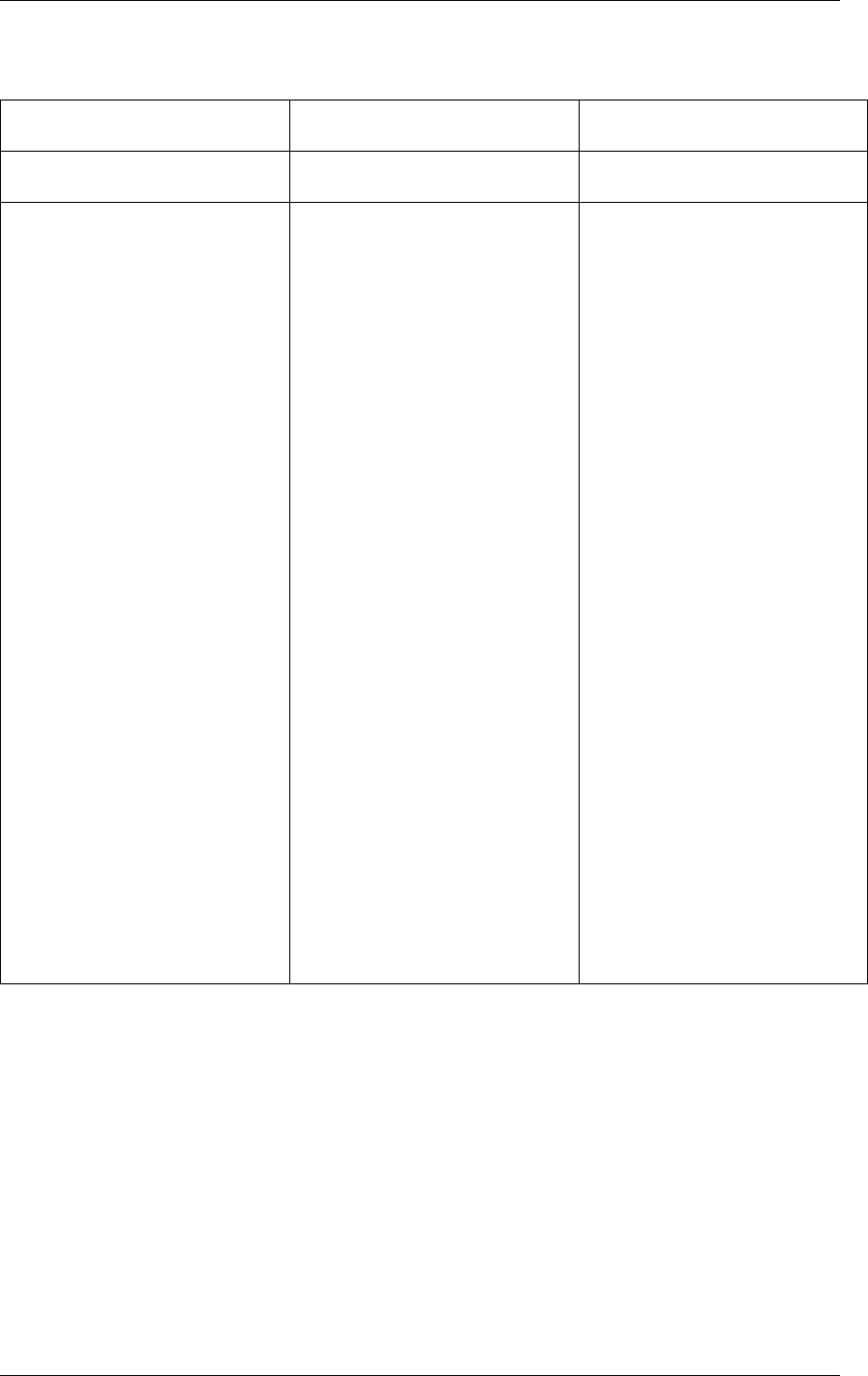

The key fields of the heap structure and their function are described below (see also diagram

above):

• lock - the lock field is needed to synchronize access to the heap. Given that the free

space in the heap is tracked using a linked list, we need a lock to prevent two threads

manipulating the list at the same time.

• free_head - this points to the first element in the list of free nodes for this malloc heap.

• first - this points to the first element in the heap.

• last - this points to the last element in the heap.

Structure: malloc_elem

The malloc_elem structure is used as a generic header structure for various blocks of memory.

It is used in two different ways - all shown in the diagram above:

1. As a header on a block of free or allocated memory - normal case

2. As a padding header inside a block of memory

The most important fields in the structure and how they are used are described below.

Malloc heap is a doubly-linked list, where each element keeps track of its previous and next

elements. Due to the fact that hugepage memory can come and go, neighbouring malloc

elements may not necessarily be adjacent in memory. Also, since a malloc element may

3.4. Malloc 18

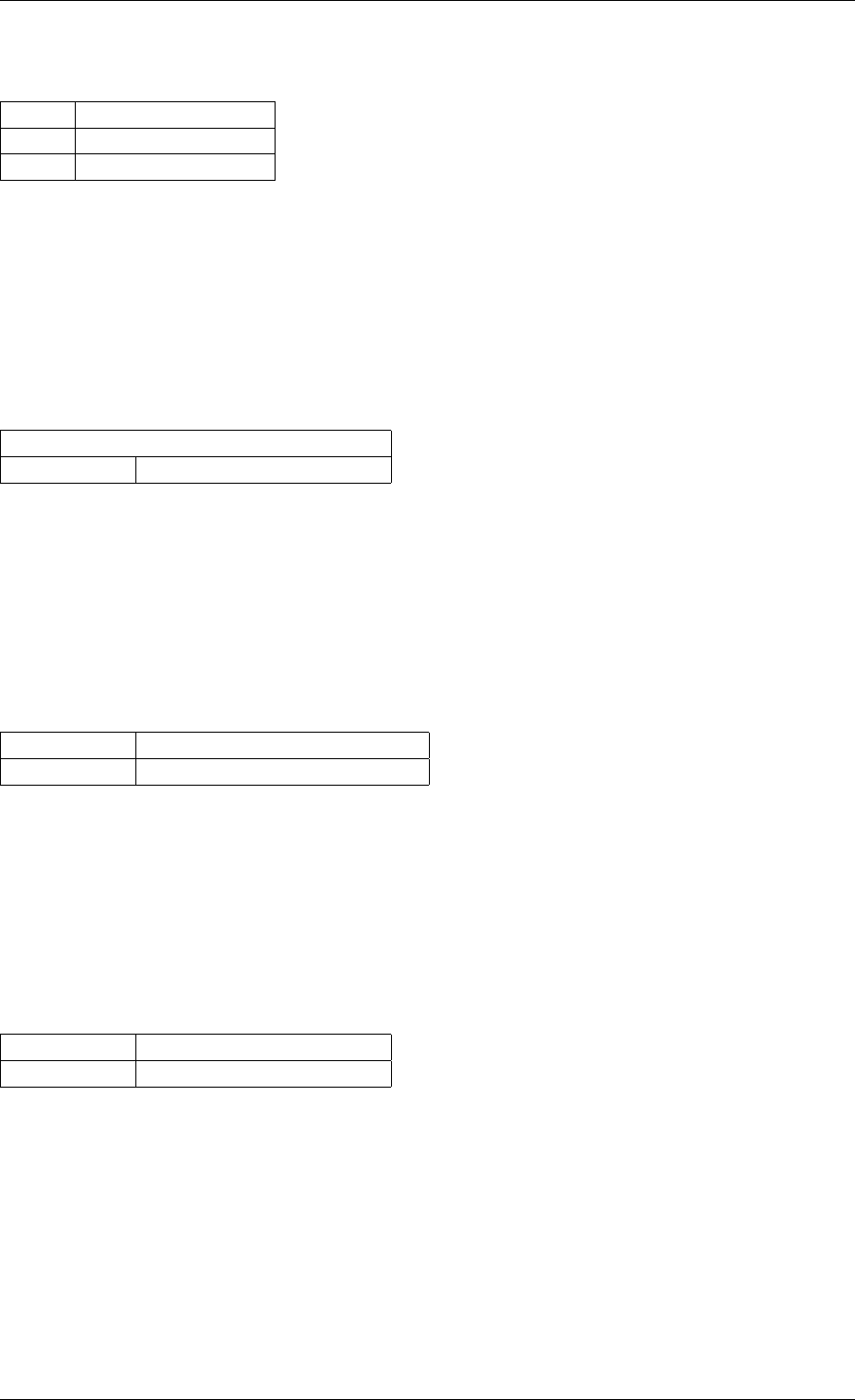

Programmer’s Guide, Release 18.11.0-rc5

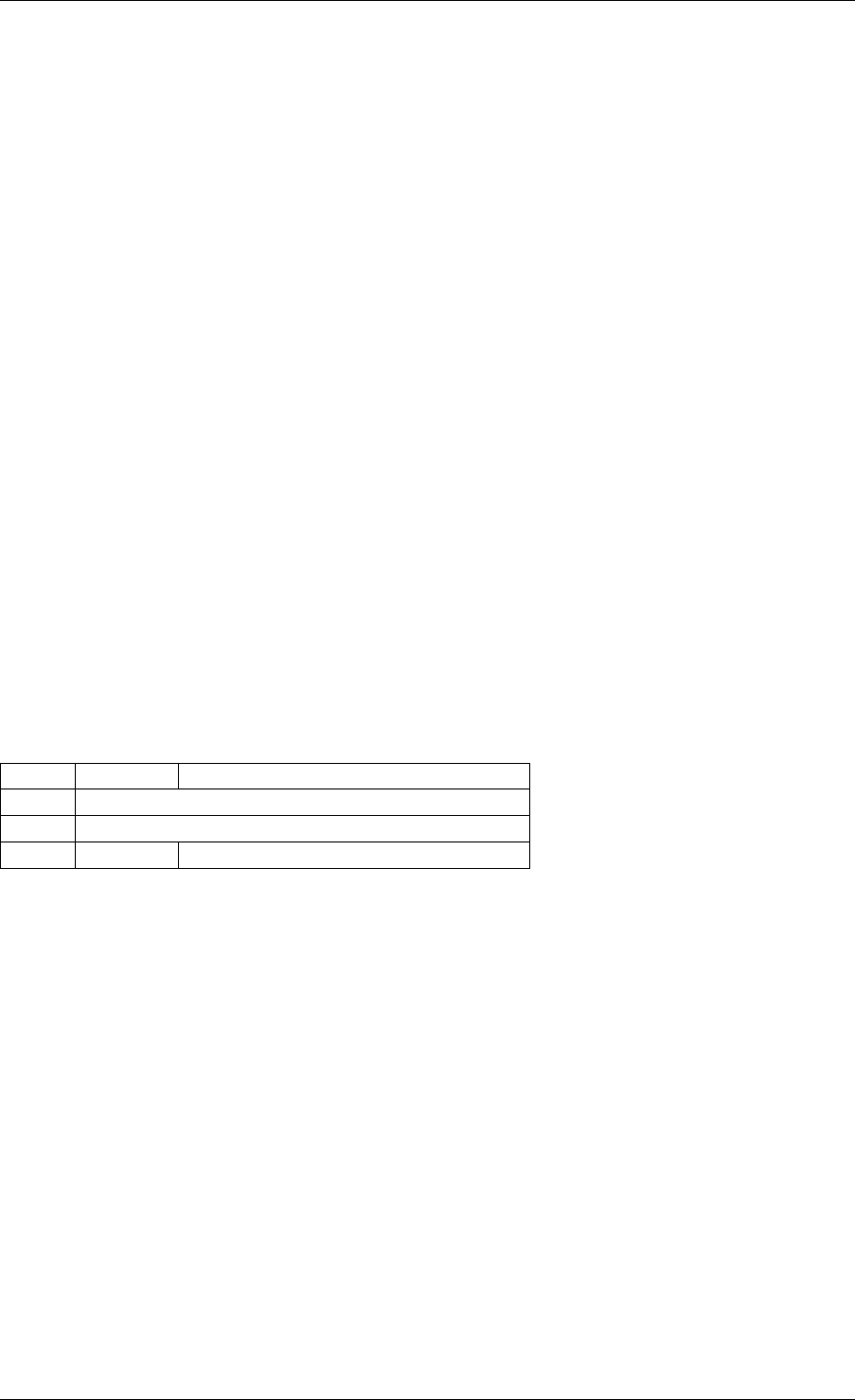

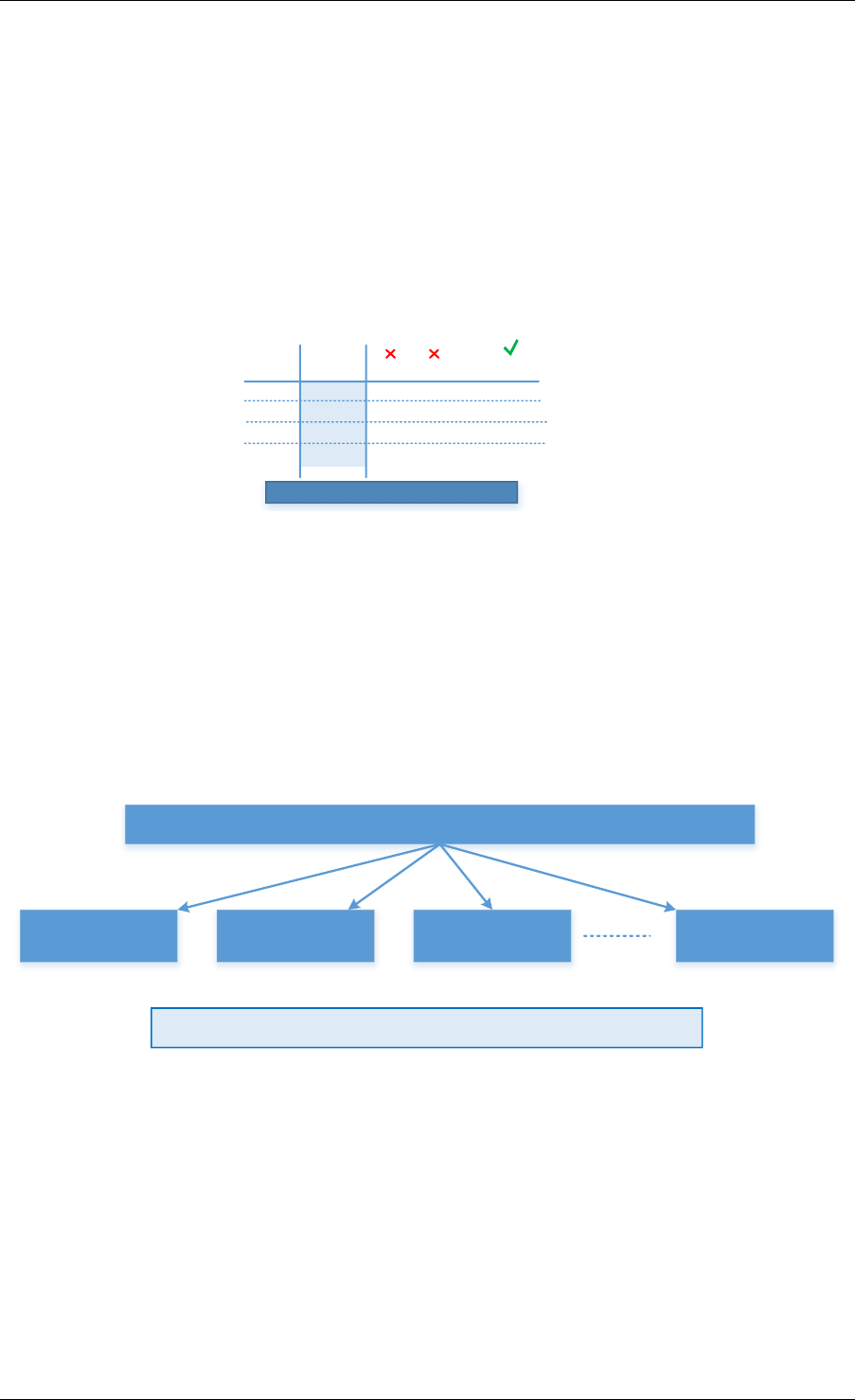

Free element header

Used element header

Free space

Allocated data

Pad element header Padding

Unavailable space

size

pad

prev/next prev/next

size

next free next free

prev/next prev/next

Fig. 3.2: Example of a malloc heap and malloc elements within the malloc library

span multiple pages, its contents may not necessarily be IOVA-contiguous either - each malloc

element is only guaranteed to be virtually contiguous.

Note: If the usage of a particular field in one of the above three usages is not described, the

field can be assumed to have an undefined value in that situation, for example, for padding

headers only the “state” and “pad” fields have valid values.

• heap - this pointer is a reference back to the heap structure from which this block was

allocated. It is used for normal memory blocks when they are being freed, to add the

newly-freed block to the heap’s free-list.

• prev - this pointer points to previous header element/block in memory. When freeing a

block, this pointer is used to reference the previous block to check if that block is also

free. If so, and the two blocks are immediately adjacent to each other, then the two free

blocks are merged to form a single larger block.

• next - this pointer points to next header element/block in memory. When freeing a block,

this pointer is used to reference the next block to check if that block is also free. If so,

and the two blocks are immediately adjacent to each other, then the two free blocks are

merged to form a single larger block.

• free_list - this is a structure pointing to previous and next elements in this heap’s free list.

It is only used in normal memory blocks; on malloc() to find a suitable free block to

allocate and on free() to add the newly freed element to the free-list.

• state - This field can have one of three values: FREE,BUSY or PAD. The former two are

to indicate the allocation state of a normal memory block and the latter is to indicate that

the element structure is a dummy structure at the end of the start-of-block padding, i.e.

where the start of the data within a block is not at the start of the block itself, due to

alignment constraints. In that case, the pad header is used to locate the actual malloc

element header for the block.

• pad - this holds the length of the padding present at the start of the block. In the case

of a normal block header, it is added to the address of the end of the header to give the

address of the start of the data area, i.e. the value passed back to the application on

a malloc. Within a dummy header inside the padding, this same value is stored, and is

subtracted from the address of the dummy header to yield the address of the actual block

3.4. Malloc 19

Programmer’s Guide, Release 18.11.0-rc5

header.

• size - the size of the data block, including the header itself.

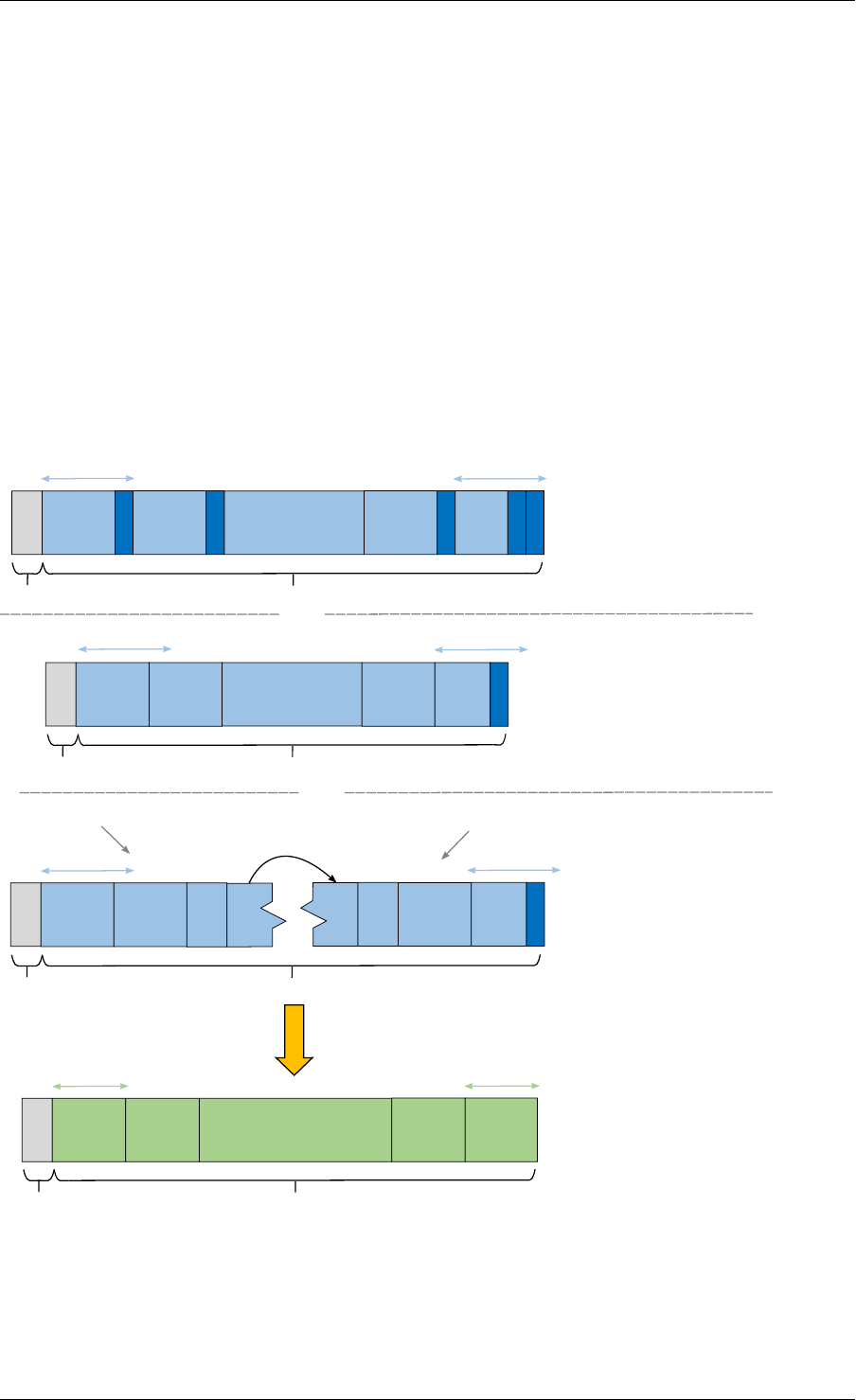

Memory Allocation

On EAL initialization, all preallocated memory segments are setup as part of the malloc heap.

This setup involves placing an element header with FREE at the start of each virtually contigu-

ous segment of memory. The FREE element is then added to the free_list for the malloc

heap.

This setup also happens whenever memory is allocated at runtime (if supported), in which case

newly allocated pages are also added to the heap, merging with any adjacent free segments if

there are any.

When an application makes a call to a malloc-like function, the malloc function will first index the

lcore_config structure for the calling thread, and determine the NUMA node of that thread.

The NUMA node is used to index the array of malloc_heap structures which is passed as a

parameter to the heap_alloc() function, along with the requested size, type, alignment and

boundary parameters.

The heap_alloc() function will scan the free_list of the heap, and attempt to find a free block

suitable for storing data of the requested size, with the requested alignment and boundary

constraints.

When a suitable free element has been identified, the pointer to be returned to the user is

calculated. The cache-line of memory immediately preceding this pointer is filled with a struct

malloc_elem header. Because of alignment and boundary constraints, there could be free

space at the start and/or end of the element, resulting in the following behavior:

1. Check for trailing space. If the trailing space is big enough, i.e. > 128 bytes, then the free

element is split. If it is not, then we just ignore it (wasted space).

2. Check for space at the start of the element. If the space at the start is small, i.e. <=128

bytes, then a pad header is used, and the remaining space is wasted. If, however, the

remaining space is greater, then the free element is split.

The advantage of allocating the memory from the end of the existing element is that no adjust-

ment of the free list needs to take place - the existing element on the free list just has its size

value adjusted, and the next/previous elements have their “prev”/”next” pointers redirected to

the newly created element.

In case when there is not enough memory in the heap to satisfy allocation request, EAL will

attempt to allocate more memory from the system (if supported) and, following successful

allocation, will retry reserving the memory again. In a multiprocessing scenario, all primary

and secondary processes will synchronize their memory maps to ensure that any valid pointer

to DPDK memory is guaranteed to be valid at all times in all currently running processes.

Failure to synchronize memory maps in one of the processes will cause allocation to fail, even

though some of the processes may have allocated the memory successfully. The memory is

not added to the malloc heap unless primary process has ensured that all other processes

have mapped this memory successfully.

Any successful allocation event will trigger a callback, for which user applications and other

DPDK subsystems can register. Additionally, validation callbacks will be triggered before allo-

3.4. Malloc 20

Programmer’s Guide, Release 18.11.0-rc5

cation if the newly allocated memory will exceed threshold set by the user, giving a chance to

allow or deny allocation.

Note: Any allocation of new pages has to go through primary process. If the primary process

is not active, no memory will be allocated even if it was theoretically possible to do so. This is

because primary’s process map acts as an authority on what should or should not be mapped,

while each secondary process has its own, local memory map. Secondary processes do not

update the shared memory map, they only copy its contents to their local memory map.

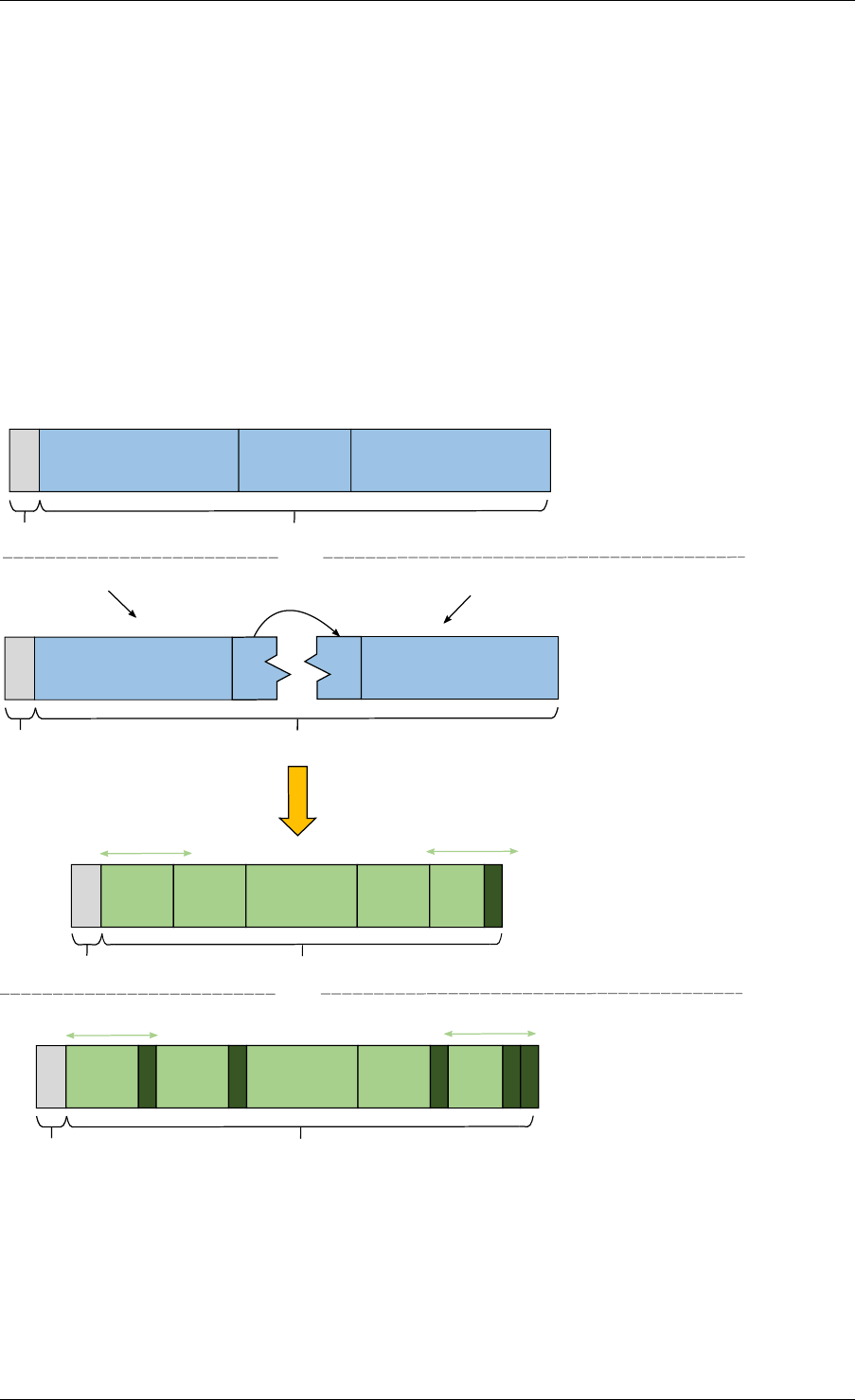

Freeing Memory

To free an area of memory, the pointer to the start of the data area is passed to the free

function. The size of the malloc_elem structure is subtracted from this pointer to get the

element header for the block. If this header is of type PAD then the pad length is further

subtracted from the pointer to get the proper element header for the entire block.

From this element header, we get pointers to the heap from which the block was allocated and

to where it must be freed, as well as the pointer to the previous and next elements. These

next and previous elements are then checked to see if they are also FREE and are immediately

adjacent to the current one, and if so, they are merged with the current element. This means

that we can never have two FREE memory blocks adjacent to one another, as they are always

merged into a single block.

If deallocating pages at runtime is supported, and the free element encloses one or more

pages, those pages can be deallocated and be removed from the heap. If DPDK was started

with command-line parameters for preallocating memory (-m or --socket-mem), then those

pages that were allocated at startup will not be deallocated.

Any successful deallocation event will trigger a callback, for which user applications and other

DPDK subsystems can register.

3.4. Malloc 21

CHAPTER

FOUR

SERVICE CORES

DPDK has a concept known as service cores, which enables a dynamic way of performing

work on DPDK lcores. Service core support is built into the EAL, and an API is provided to

optionally allow applications to control how the service cores are used at runtime.

The service cores concept is built up out of services (components of DPDK that require CPU

cycles to operate) and service cores (DPDK lcores, tasked with running services). The power

of the service core concept is that the mapping between service cores and services can be

configured to abstract away the difference between platforms and environments.

For example, the Eventdev has hardware and software PMDs. Of these the software PMD

requires an lcore to perform the scheduling operations, while the hardware PMD does not.

With service cores, the application would not directly notice that the scheduling is done in

software.

For detailed information about the service core API, please refer to the docs.

4.1 Service Core Initialization

There are two methods to having service cores in a DPDK application, either by using the

service coremask, or by dynamically adding cores using the API. The simpler of the two is to

pass the -s coremask argument to EAL, which will take any cores available in the main DPDK

coremask, and if the bits are also set in the service coremask the cores become service-cores

instead of DPDK application lcores.

4.2 Enabling Services on Cores

Each registered service can be individually mapped to a service core, or set of service cores.

Enabling a service on a particular core means that the lcore in question will run the service.

Disabling that core on the service stops the lcore in question from running the service.

Using this method, it is possible to assign specific workloads to each service core, and map N

workloads to M number of service cores. Each service lcore loops over the services that are

enabled for that core, and invokes the function to run the service.

22

Programmer’s Guide, Release 18.11.0-rc5

4.3 Service Core Statistics

The service core library is capable of collecting runtime statistics like number of calls to a

specific service, and number of cycles used by the service. The cycle count collection is

dynamically configurable, allowing any application to profile the services running on the system

at any time.

4.3. Service Core Statistics 23

CHAPTER

FIVE

RING LIBRARY

The ring allows the management of queues. Instead of having a linked list of infinite size, the

rte_ring has the following properties:

• FIFO

• Maximum size is fixed, the pointers are stored in a table

• Lockless implementation

• Multi-consumer or single-consumer dequeue

• Multi-producer or single-producer enqueue

• Bulk dequeue - Dequeues the specified count of objects if successful; otherwise fails

• Bulk enqueue - Enqueues the specified count of objects if successful; otherwise fails

• Burst dequeue - Dequeue the maximum available objects if the specified count cannot

be fulfilled

• Burst enqueue - Enqueue the maximum available objects if the specified count cannot

be fulfilled

The advantages of this data structure over a linked list queue are as follows:

• Faster; only requires a single Compare-And-Swap instruction of sizeof(void *) instead of

several double-Compare-And-Swap instructions.

• Simpler than a full lockless queue.

• Adapted to bulk enqueue/dequeue operations. As pointers are stored in a table, a de-

queue of several objects will not produce as many cache misses as in a linked queue.

Also, a bulk dequeue of many objects does not cost more than a dequeue of a simple

object.

The disadvantages:

• Size is fixed

• Having many rings costs more in terms of memory than a linked list queue. An empty

ring contains at least N pointers.

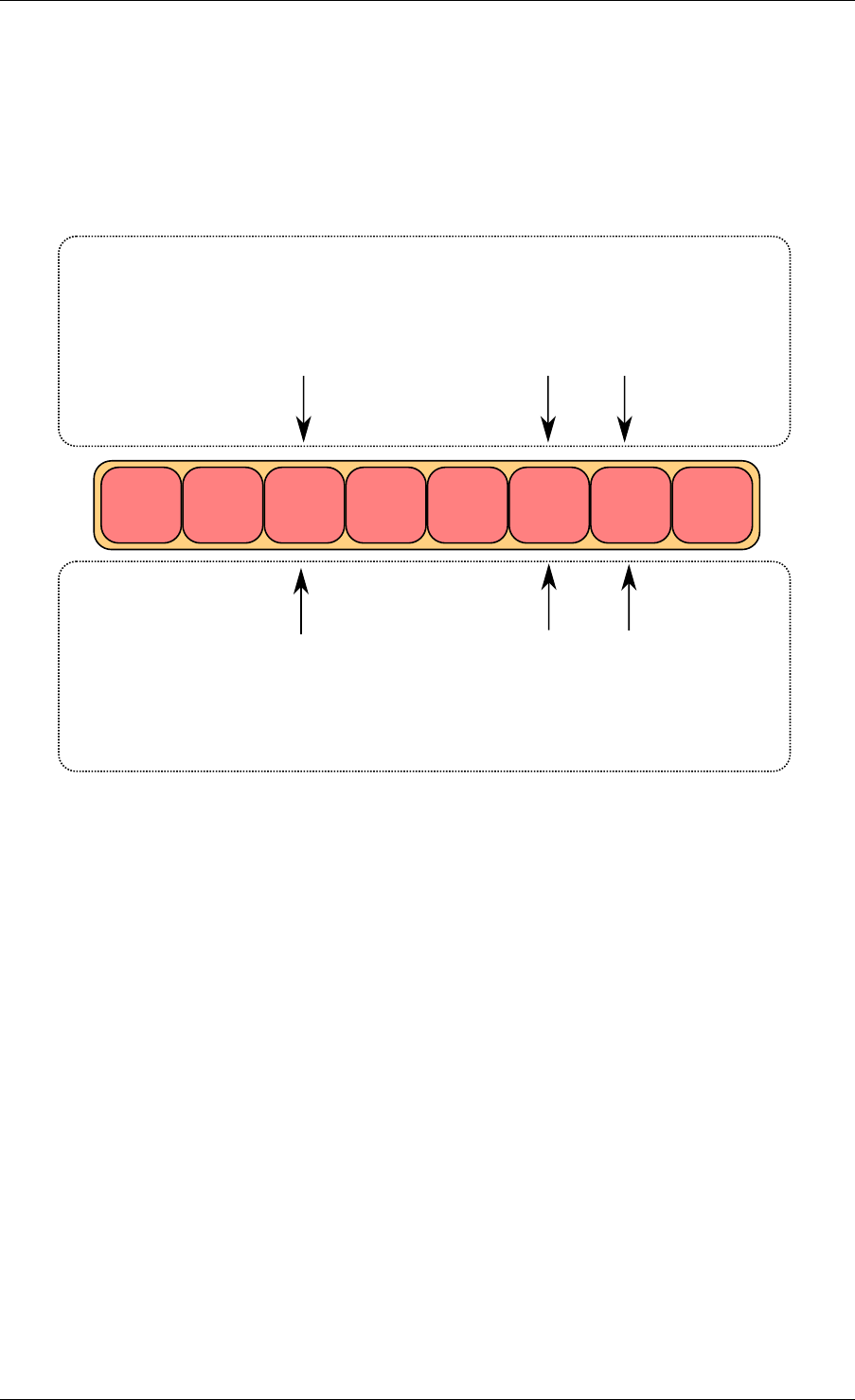

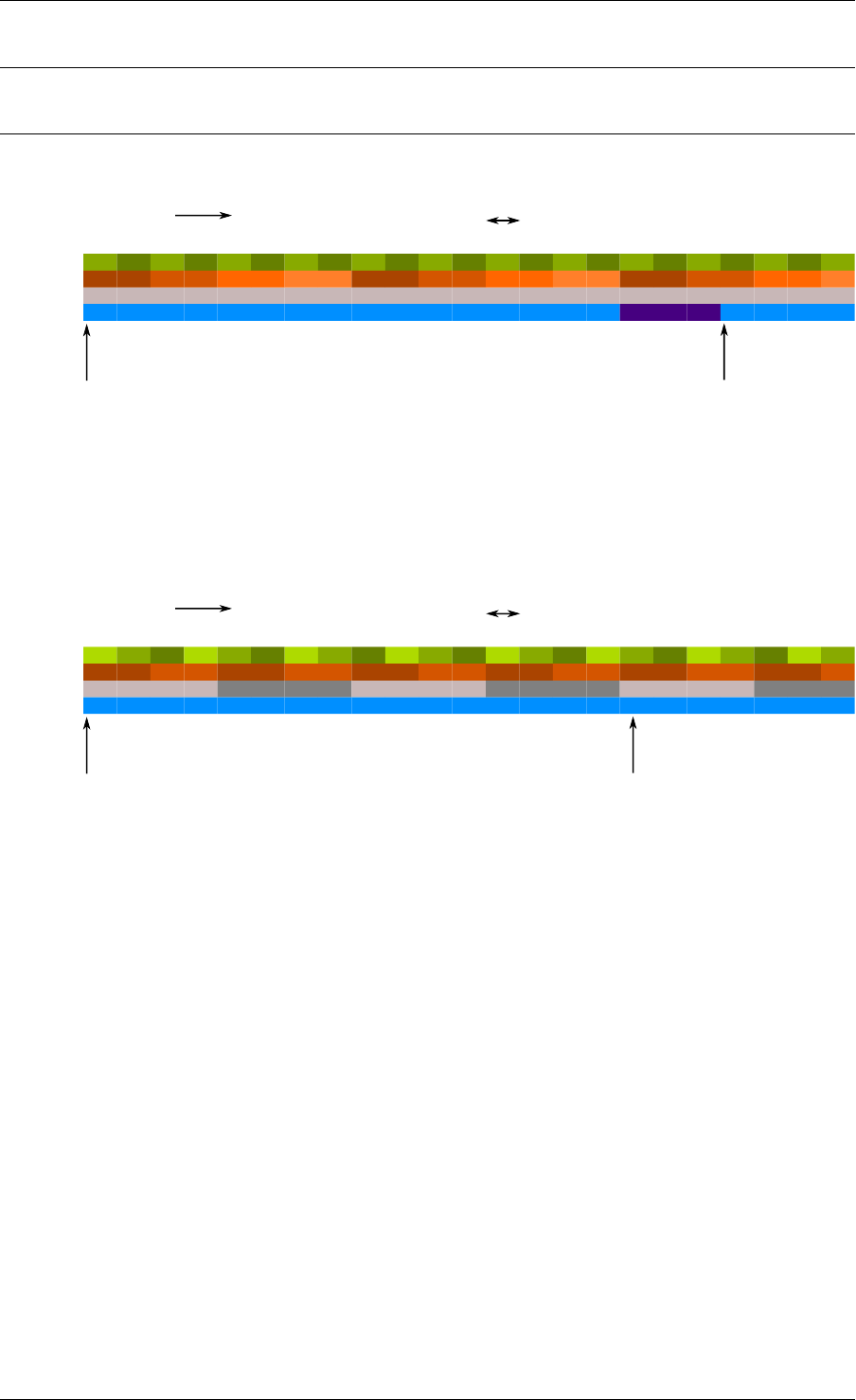

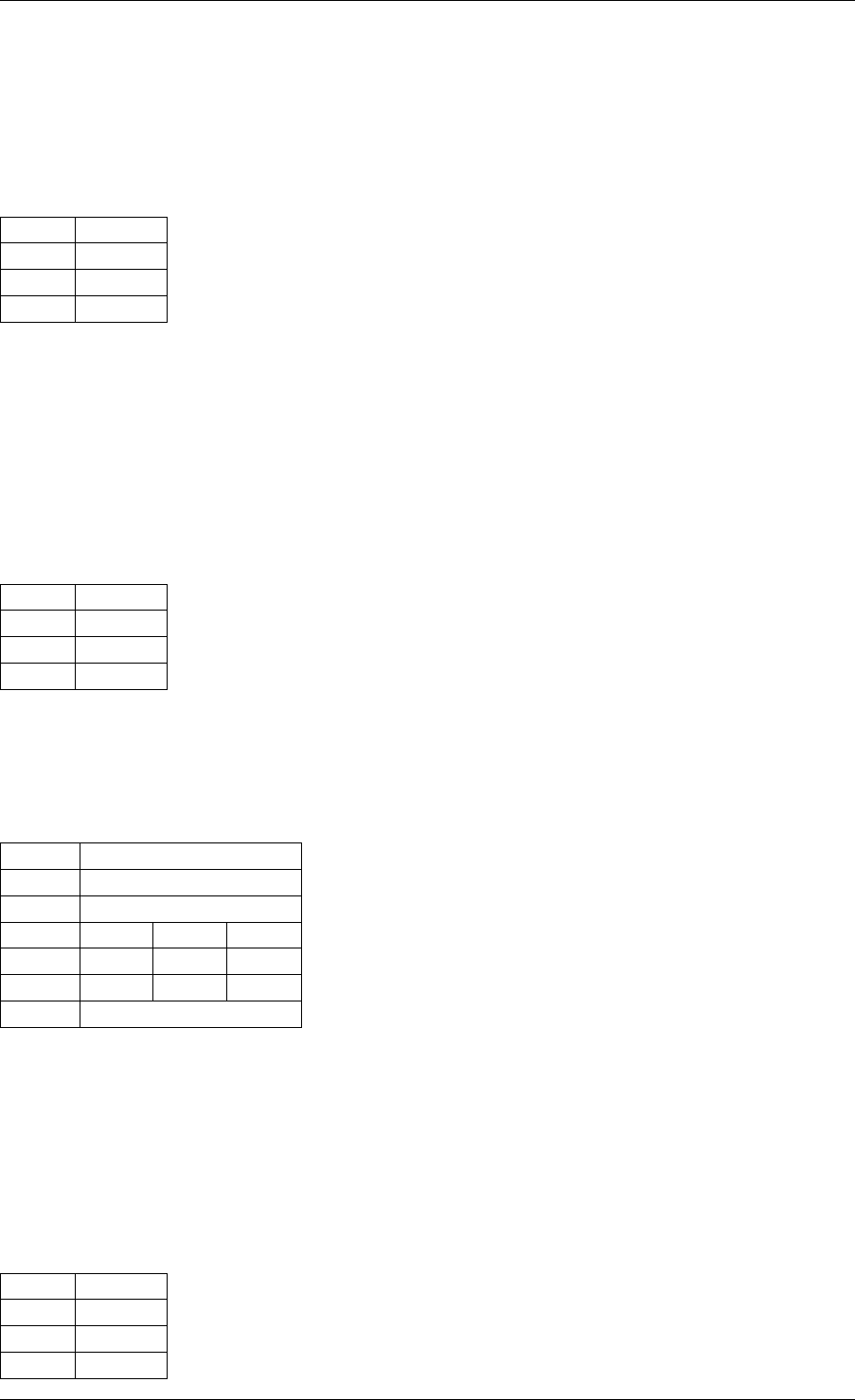

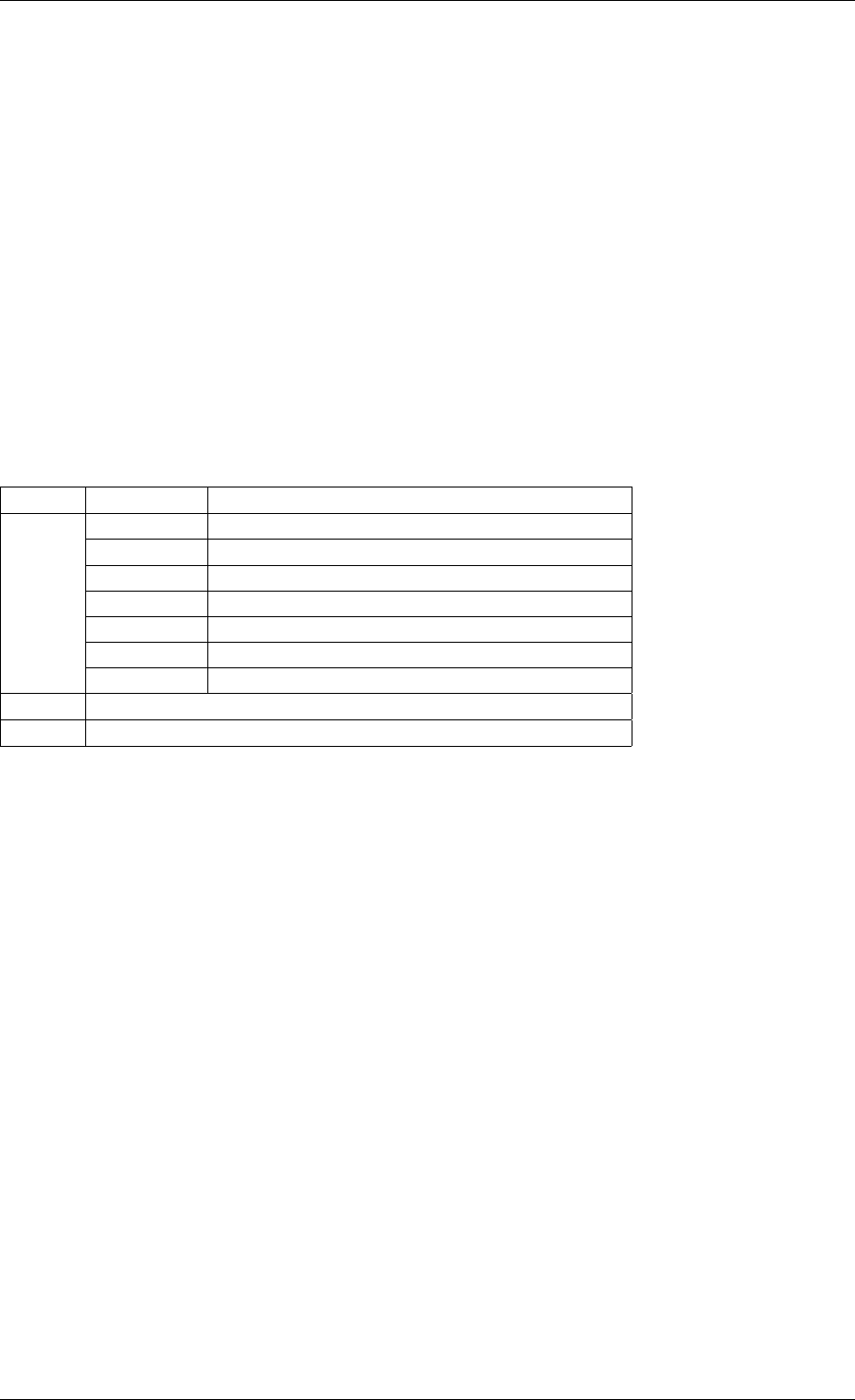

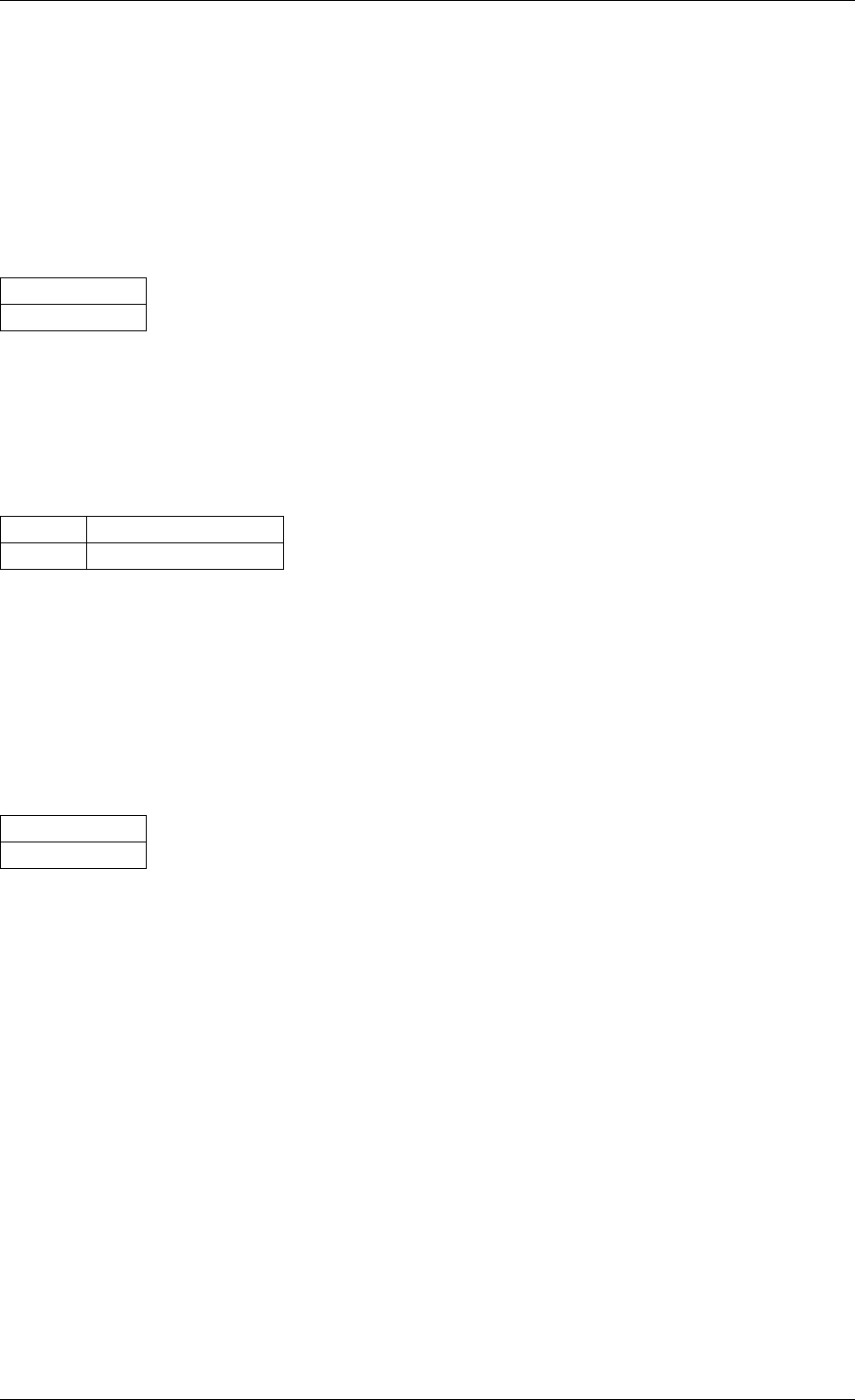

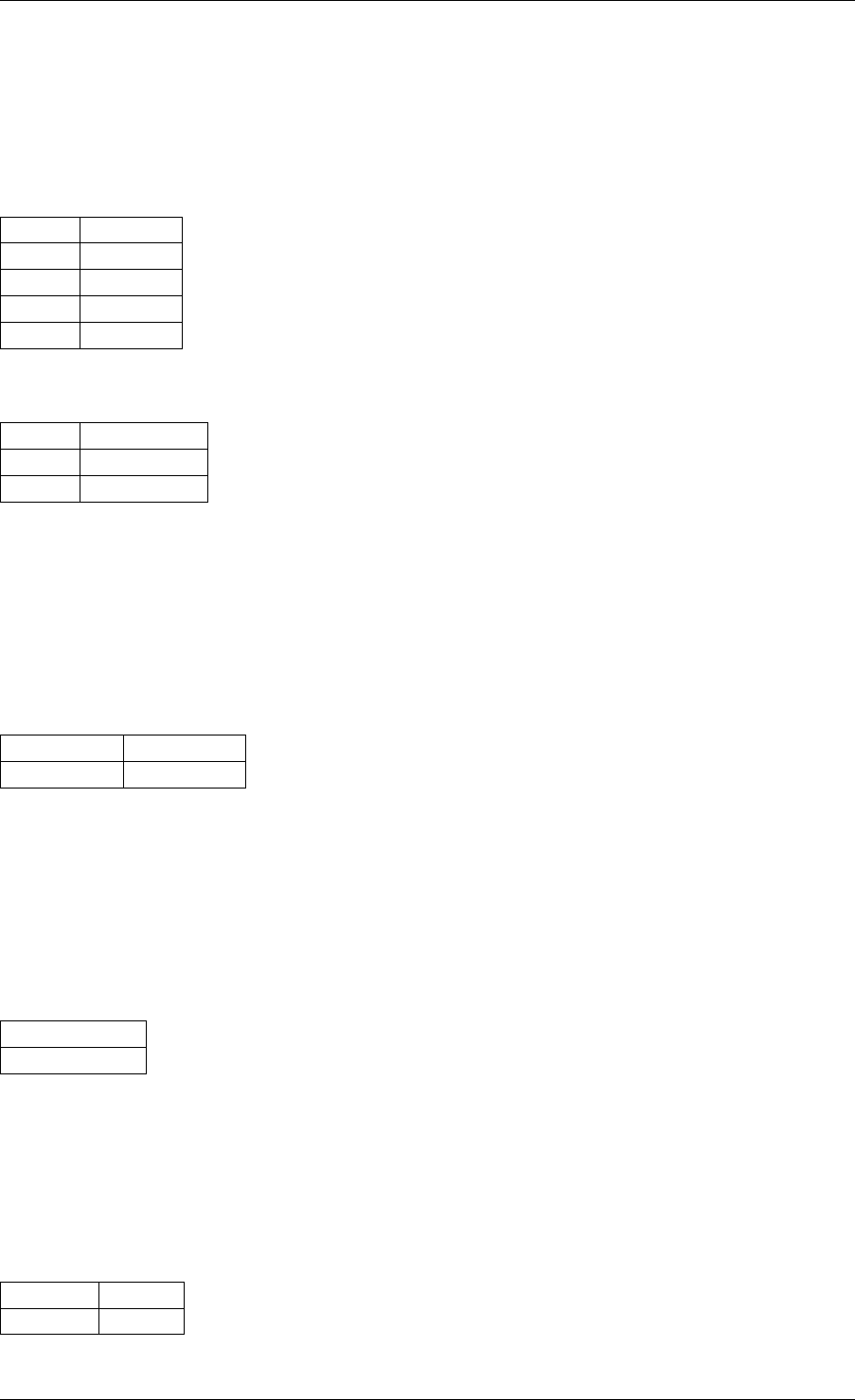

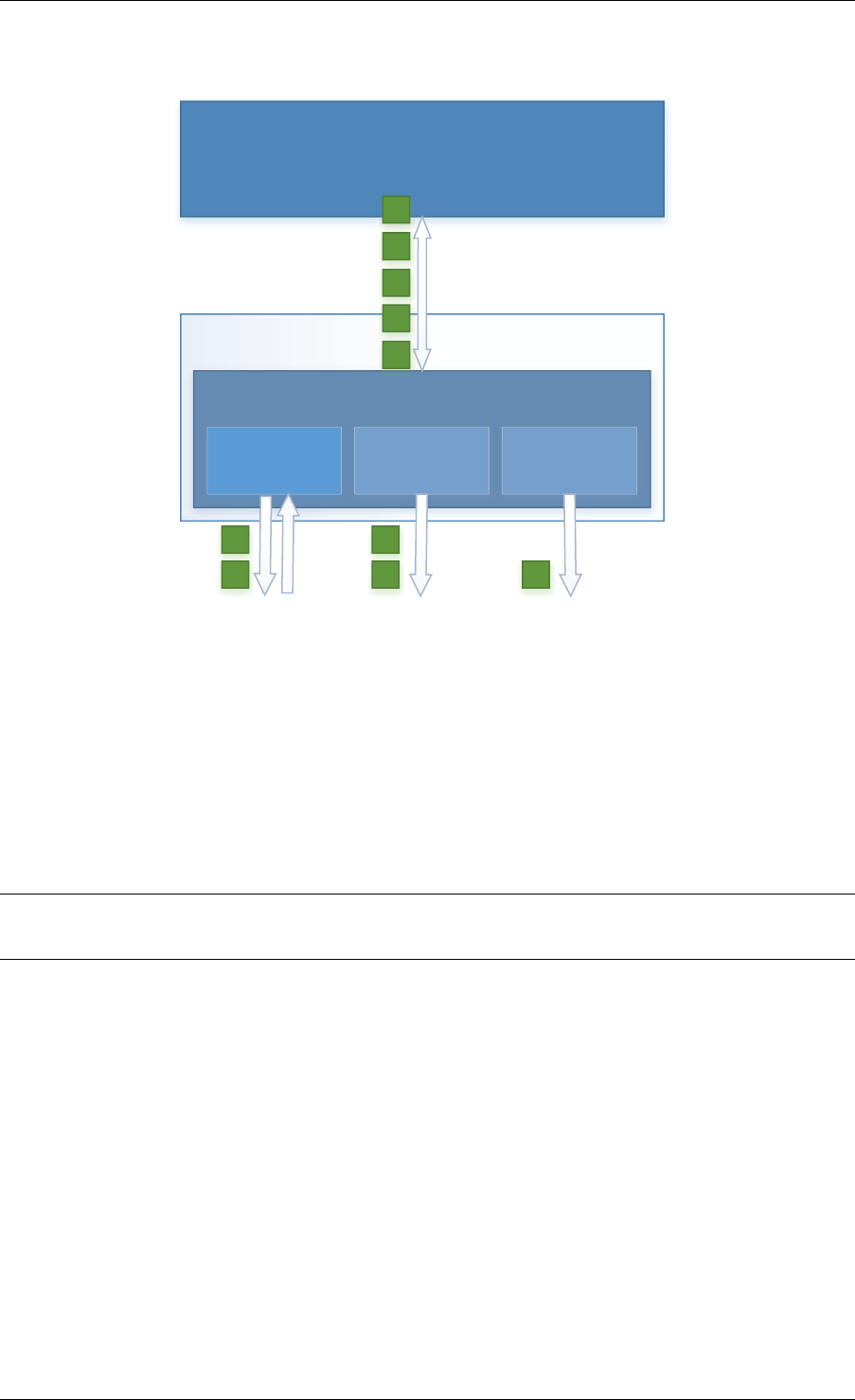

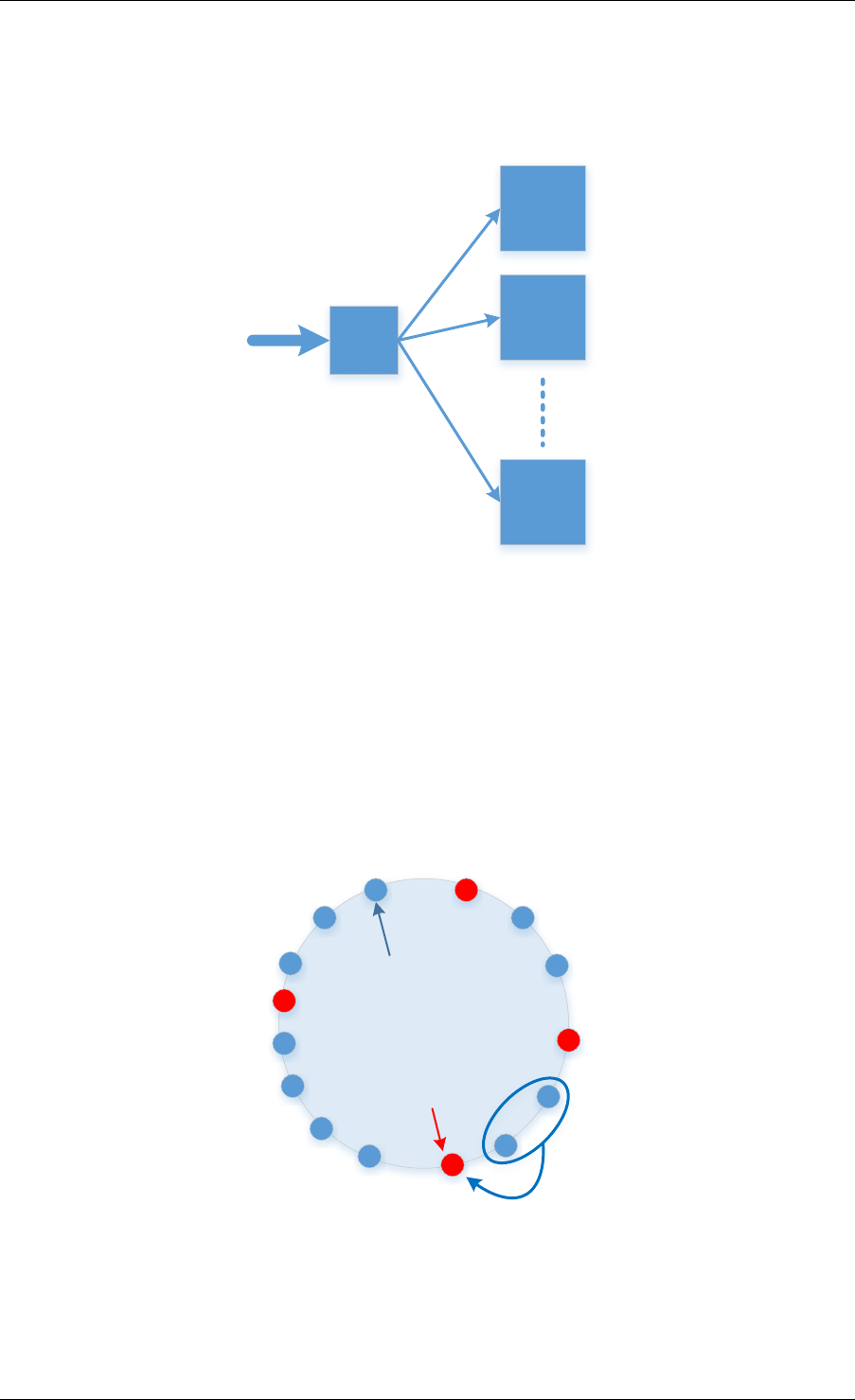

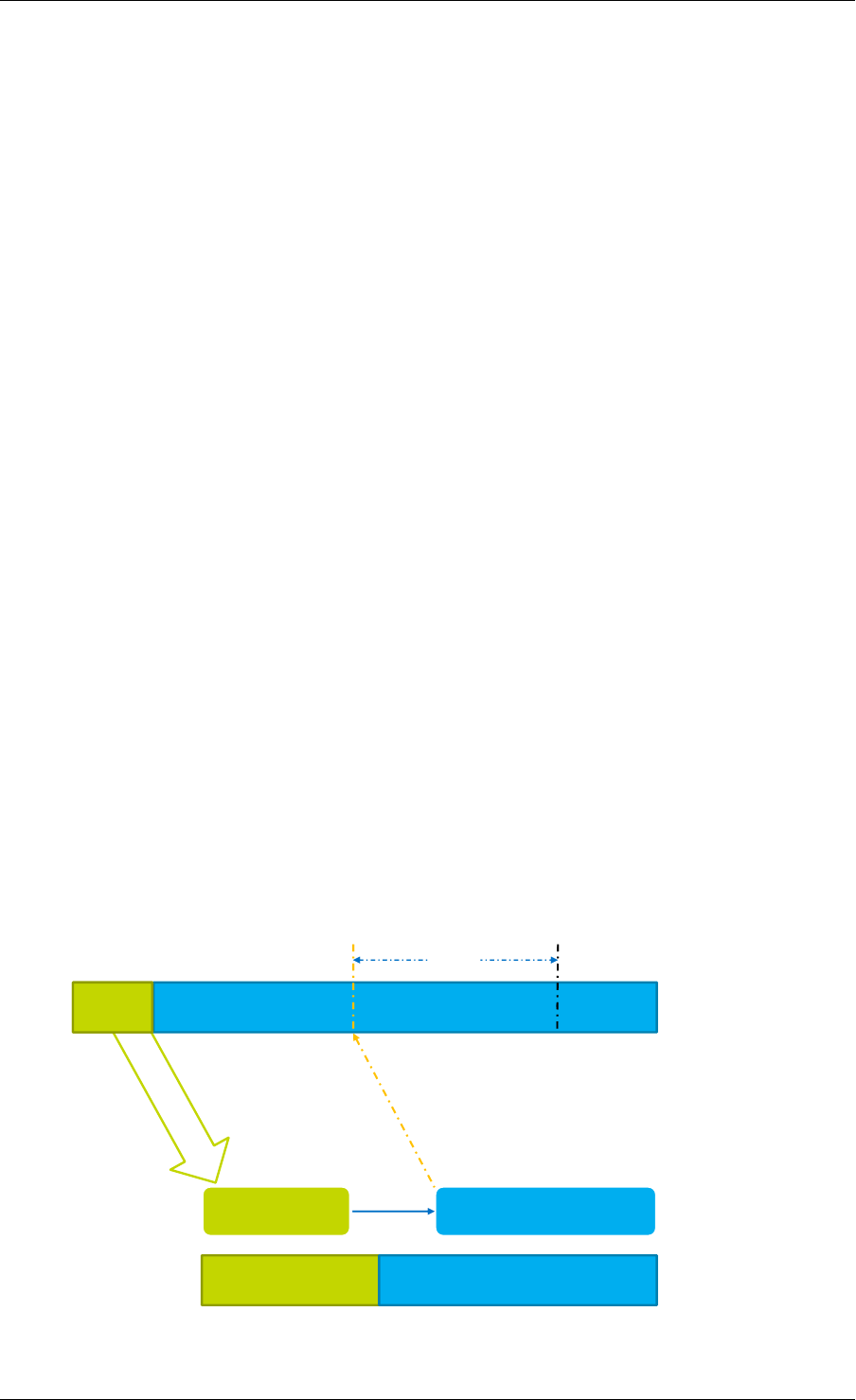

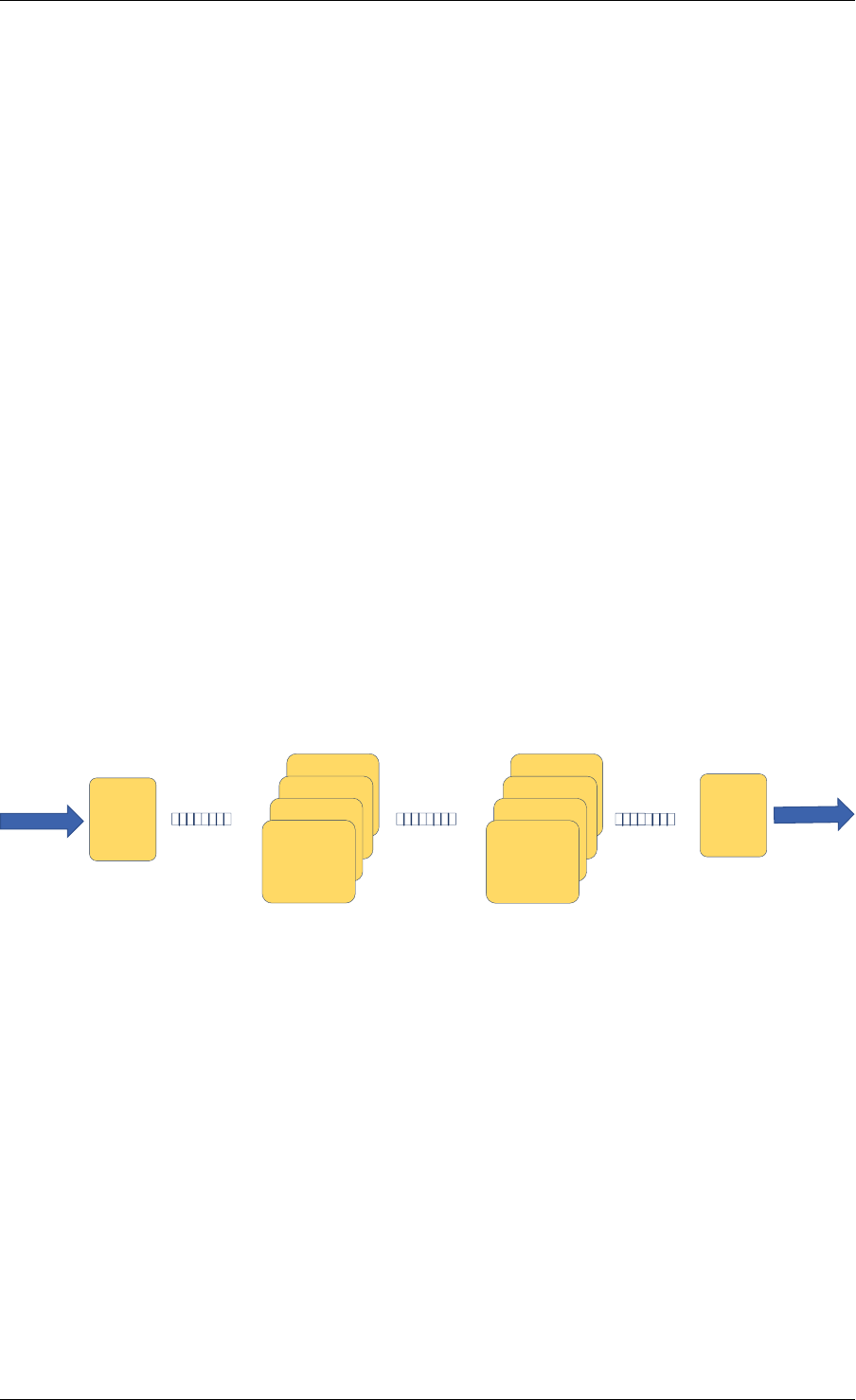

A simplified representation of a Ring is shown in with consumer and producer head and tail

pointers to objects stored in the data structure.

24

Programmer’s Guide, Release 18.11.0-rc5

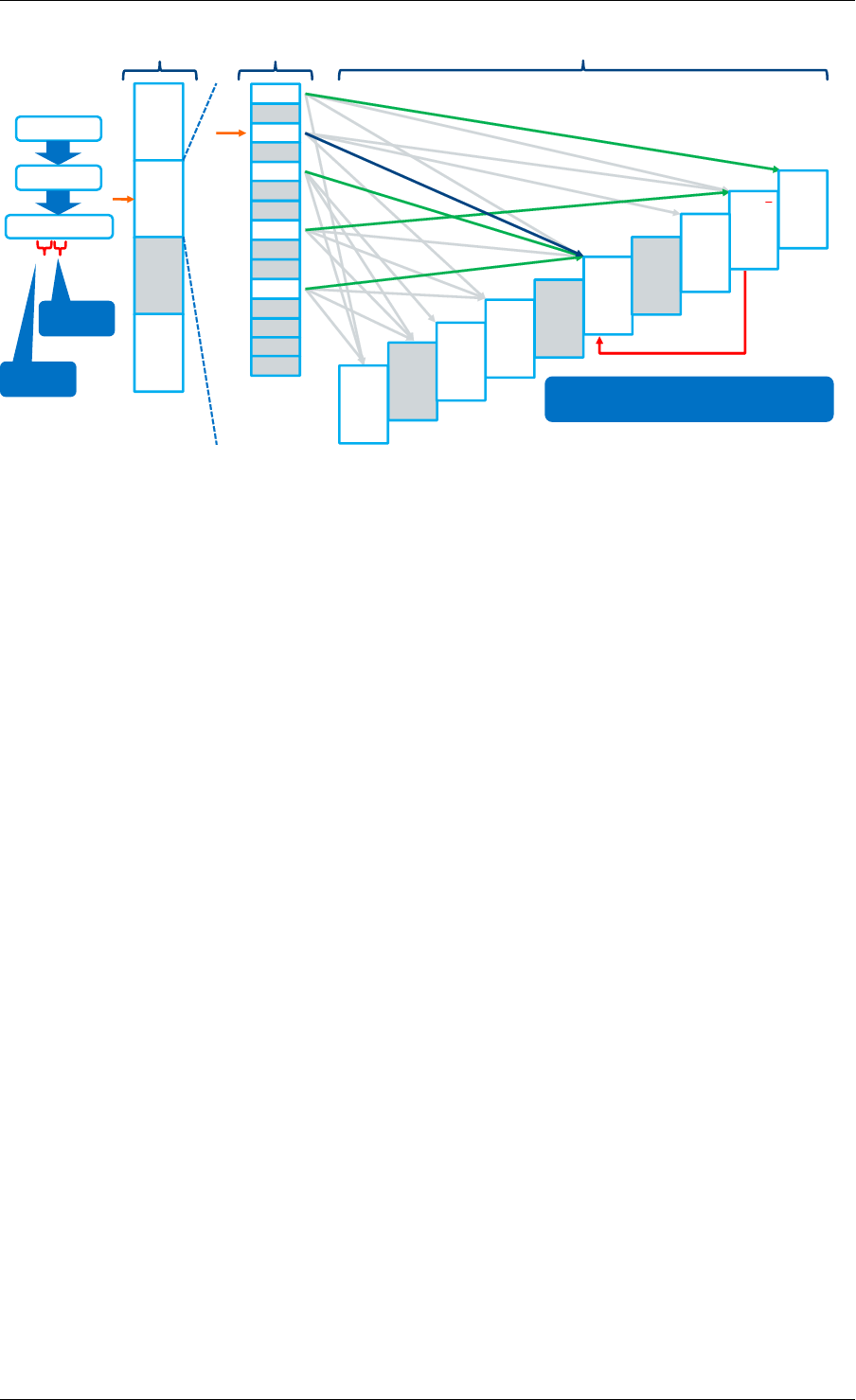

obj1 obj2 obj3

cons_head

cons_tail

prod_head

prod_tail

Fig. 5.1: Ring Structure

5.1 References for Ring Implementation in FreeBSD*

The following code was added in FreeBSD 8.0, and is used in some network device drivers (at

least in Intel drivers):

•bufring.h in FreeBSD

•bufring.c in FreeBSD

5.2 Lockless Ring Buffer in Linux*

The following is a link describing the Linux Lockless Ring Buffer Design.

5.3 Additional Features

5.3.1 Name

A ring is identified by a unique name. It is not possible to create two rings with the same name

(rte_ring_create() returns NULL if this is attempted).

5.4 Use Cases

Use cases for the Ring library include:

• Communication between applications in the DPDK

• Used by memory pool allocator

5.5 Anatomy of a Ring Buffer

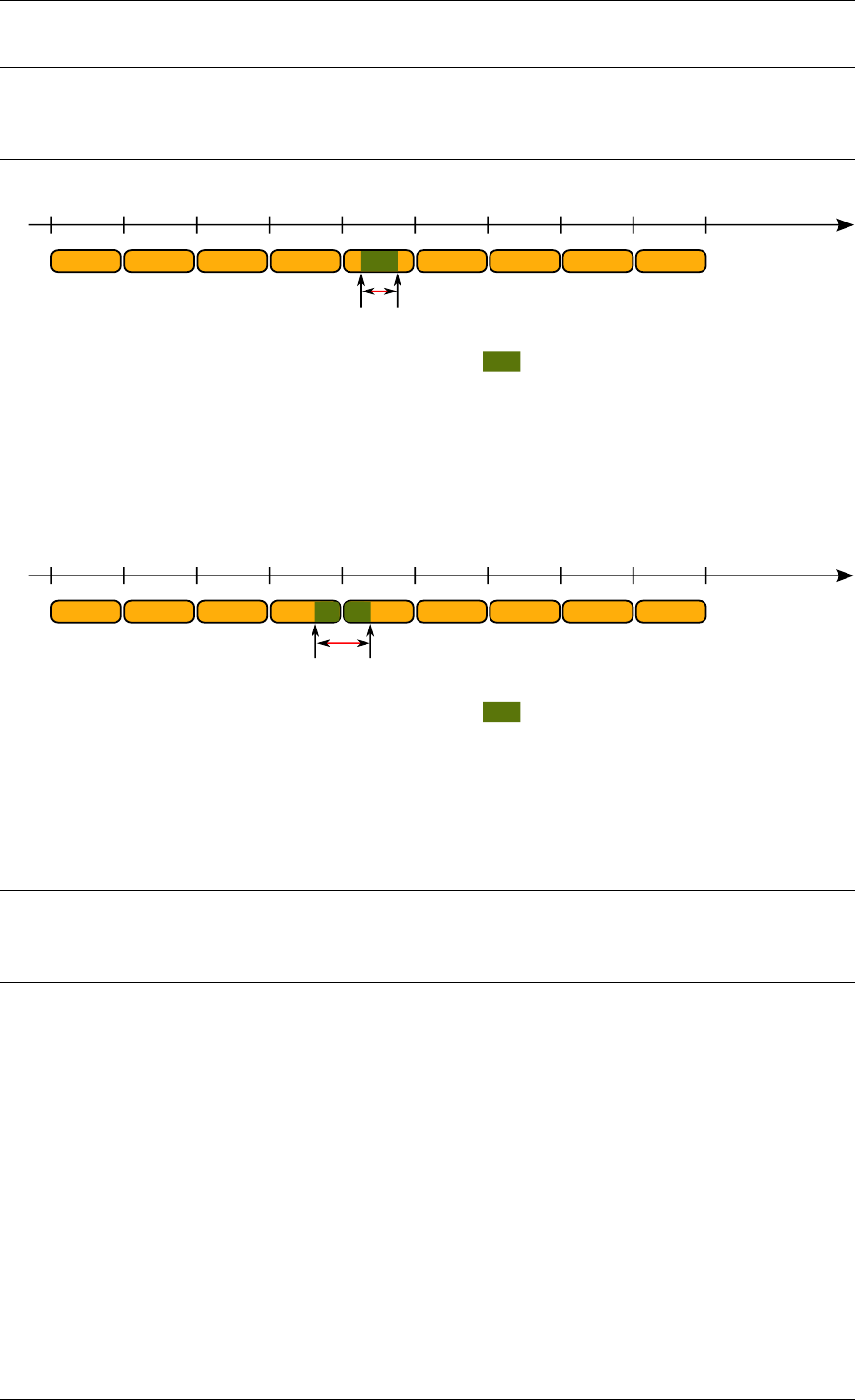

This section explains how a ring buffer operates. The ring structure is composed of two head

and tail couples; one is used by producers and one is used by the consumers. The figures of

the following sections refer to them as prod_head, prod_tail, cons_head and cons_tail.

5.1. References for Ring Implementation in FreeBSD* 25

Programmer’s Guide, Release 18.11.0-rc5

Each figure represents a simplified state of the ring, which is a circular buffer. The content

of the function local variables is represented on the top of the figure, and the content of ring

structure is represented on the bottom of the figure.

5.5.1 Single Producer Enqueue

This section explains what occurs when a producer adds an object to the ring. In this example,

only the producer head and tail (prod_head and prod_tail) are modified, and there is only one

producer.

The initial state is to have a prod_head and prod_tail pointing at the same location.

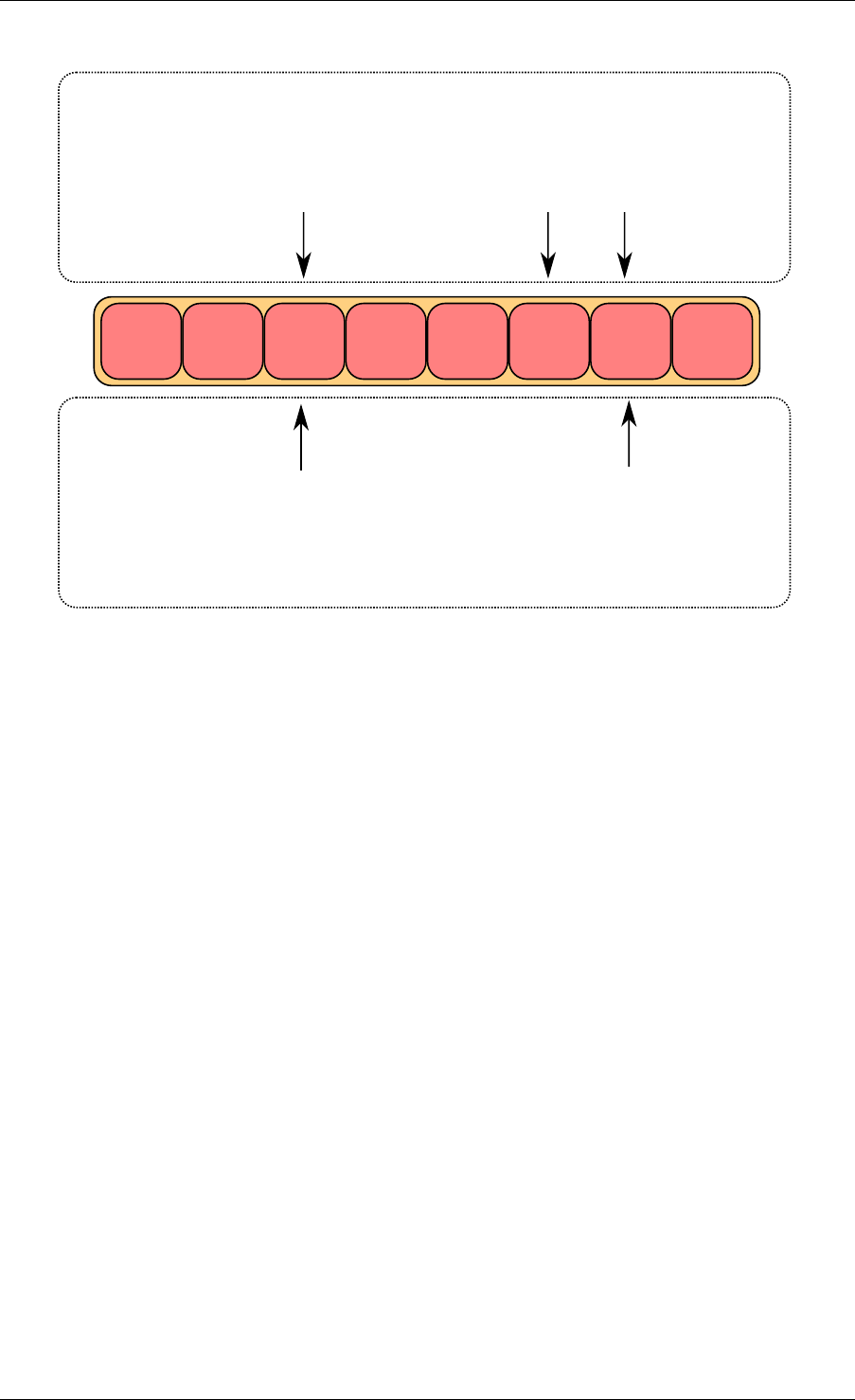

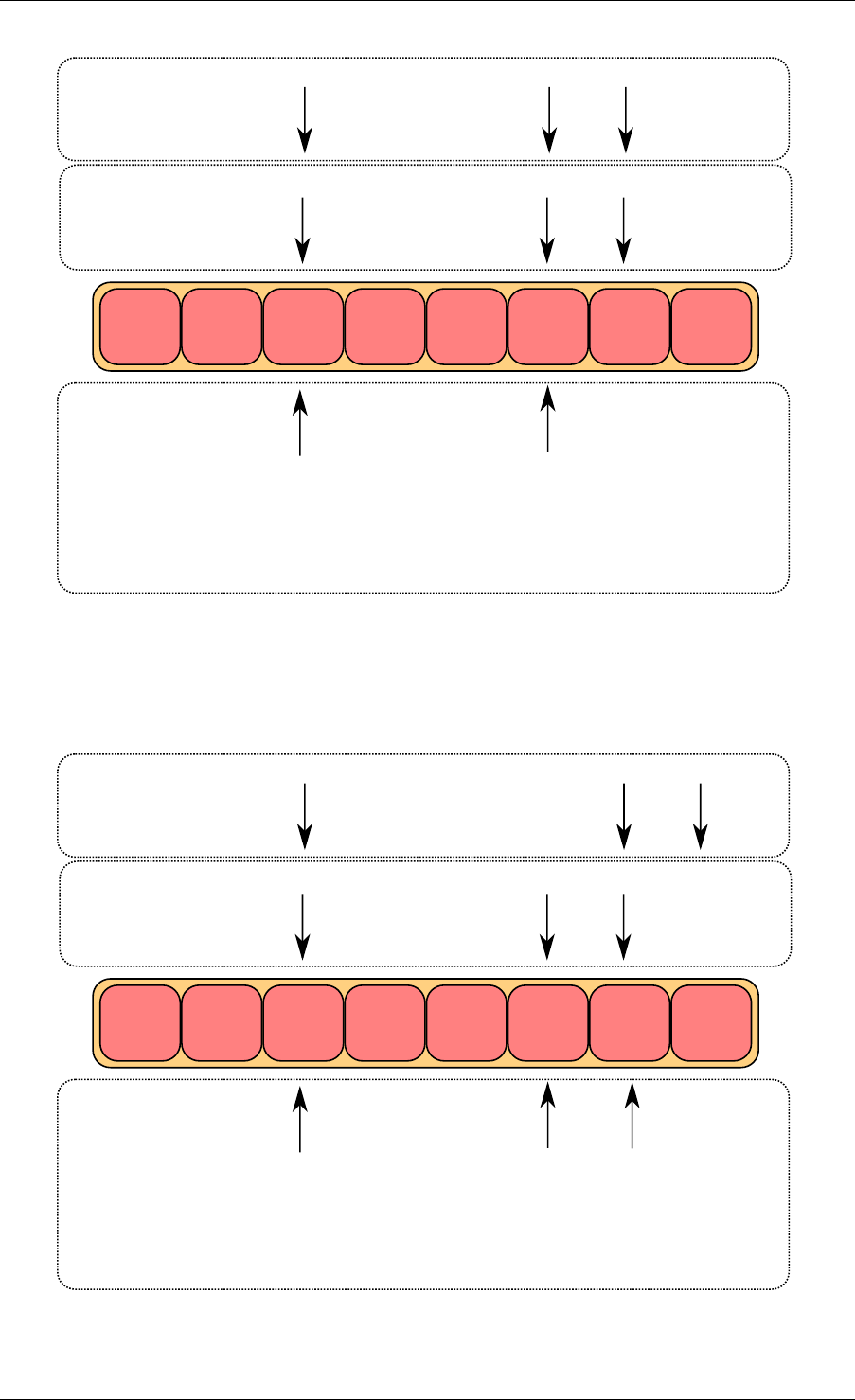

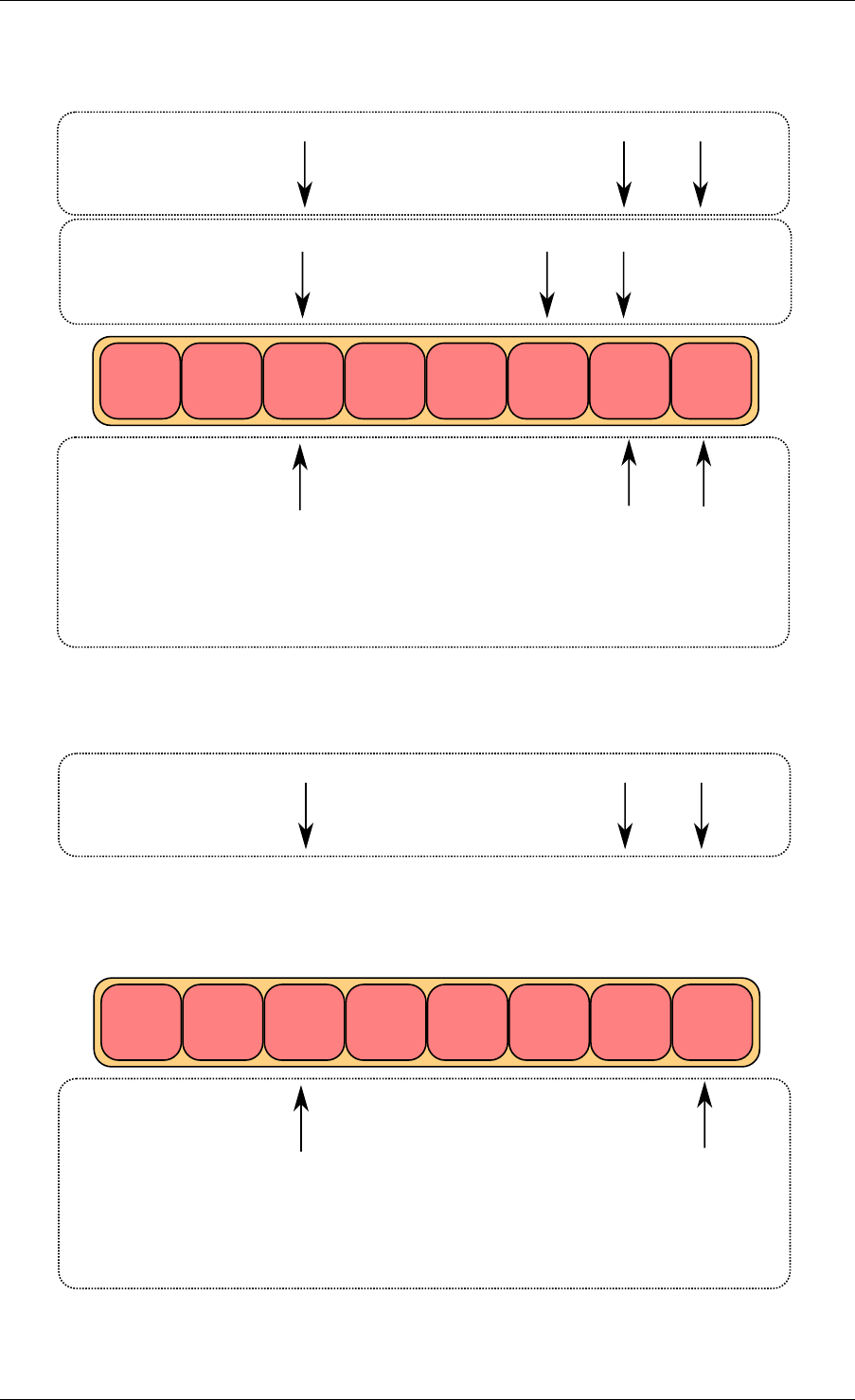

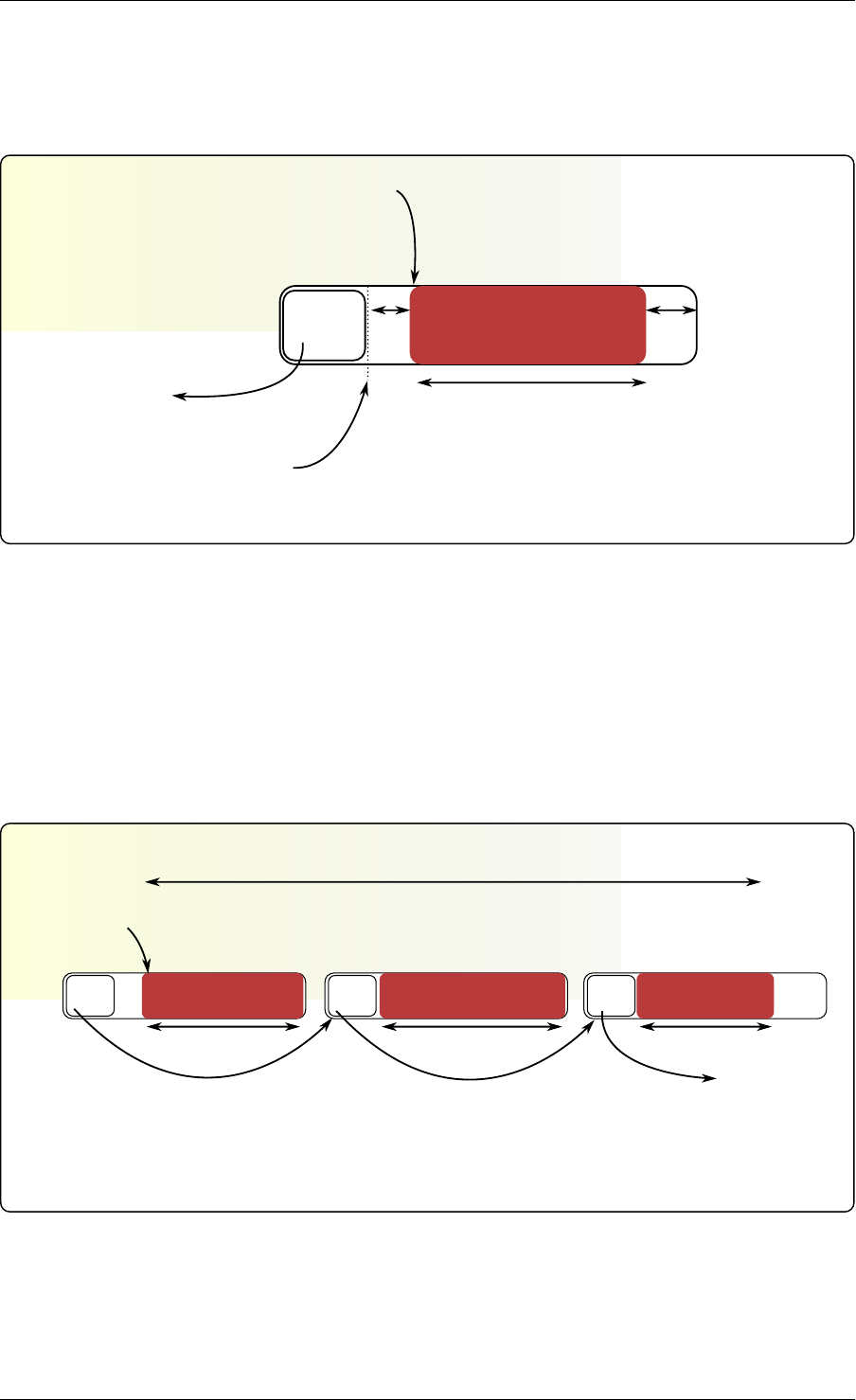

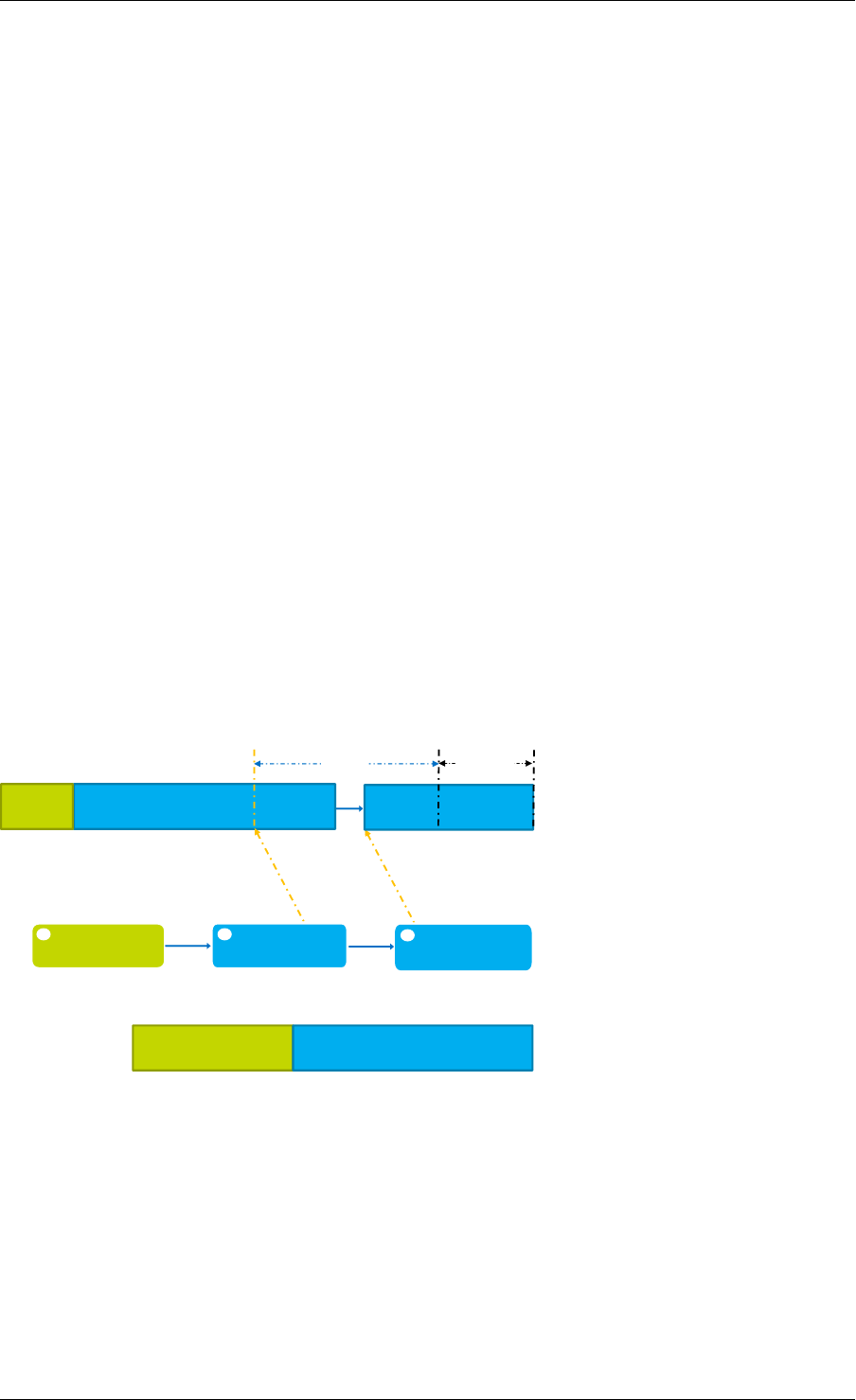

Enqueue First Step

First, ring->prod_head and ring->cons_tail are copied in local variables. The prod_next lo-

cal variable points to the next element of the table, or several elements after in case of bulk

enqueue.

If there is not enough room in the ring (this is detected by checking cons_tail), it returns an

error.

obj1 obj2 obj3

cons_head

cons_tail

prod_head

prod_tail

local variables

structure state

cons_tail prod_head prod_next

Fig. 5.2: Enqueue first step

5.5. Anatomy of a Ring Buffer 26

Programmer’s Guide, Release 18.11.0-rc5

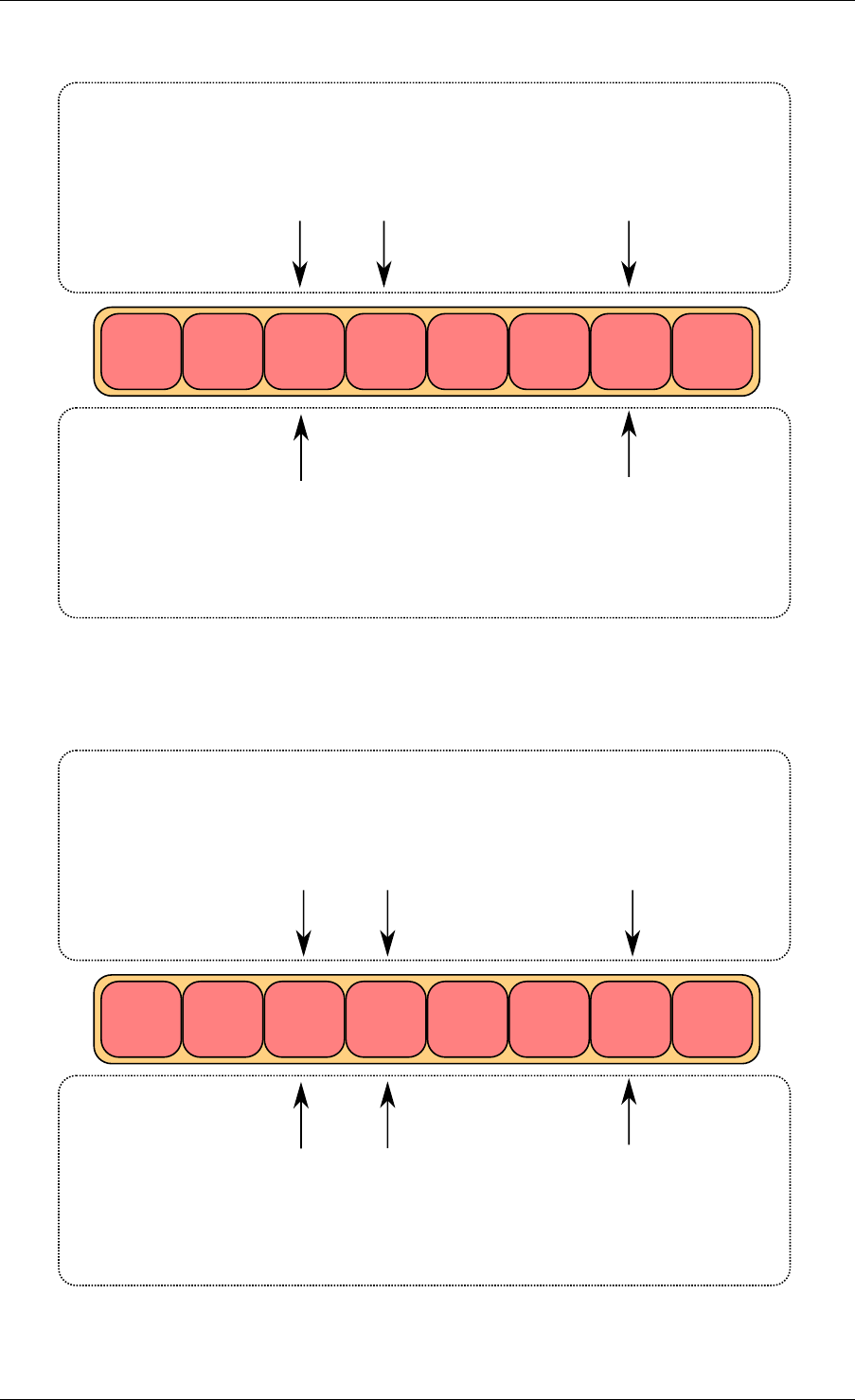

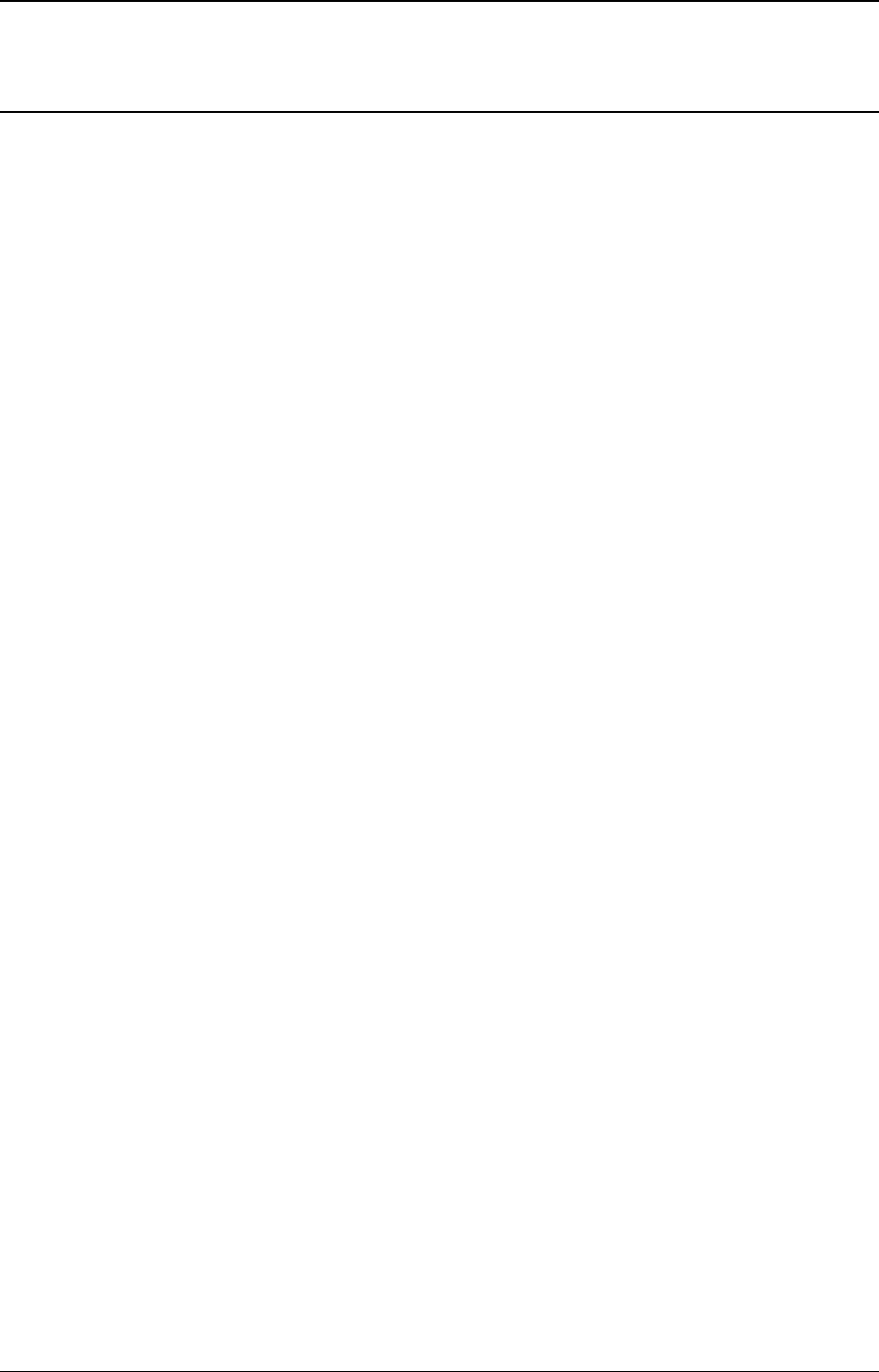

Enqueue Second Step

The second step is to modify ring->prod_head in ring structure to point to the same location

as prod_next.

A pointer to the added object is copied in the ring (obj4).

obj1 obj2 obj3

cons_head

cons_tail

prod_head

prod_tail

local variables

structure state

cons_tail prod_head prod_next

obj4

Fig. 5.3: Enqueue second step

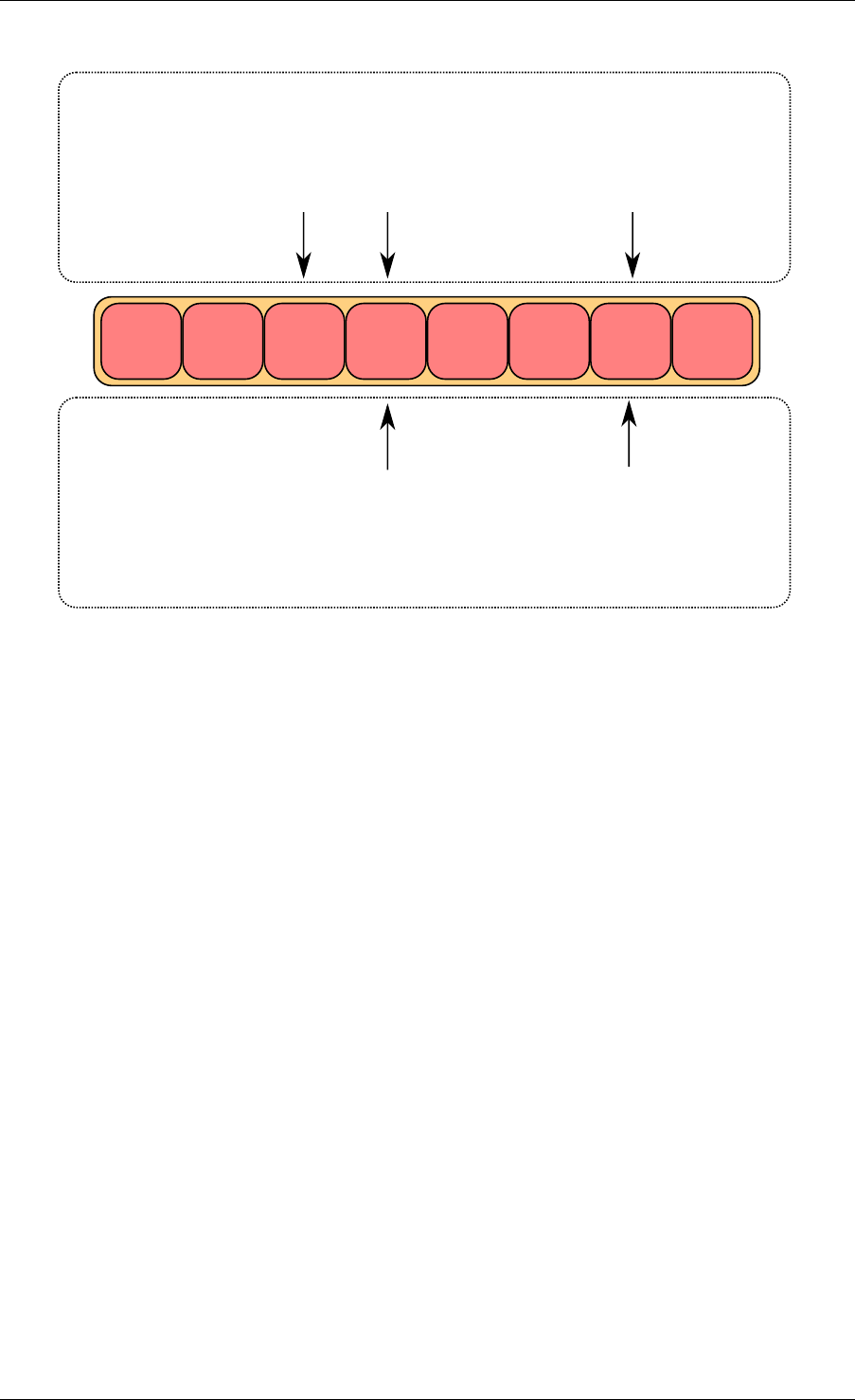

Enqueue Last Step

Once the object is added in the ring, ring->prod_tail in the ring structure is modified to point to

the same location as ring->prod_head. The enqueue operation is finished.

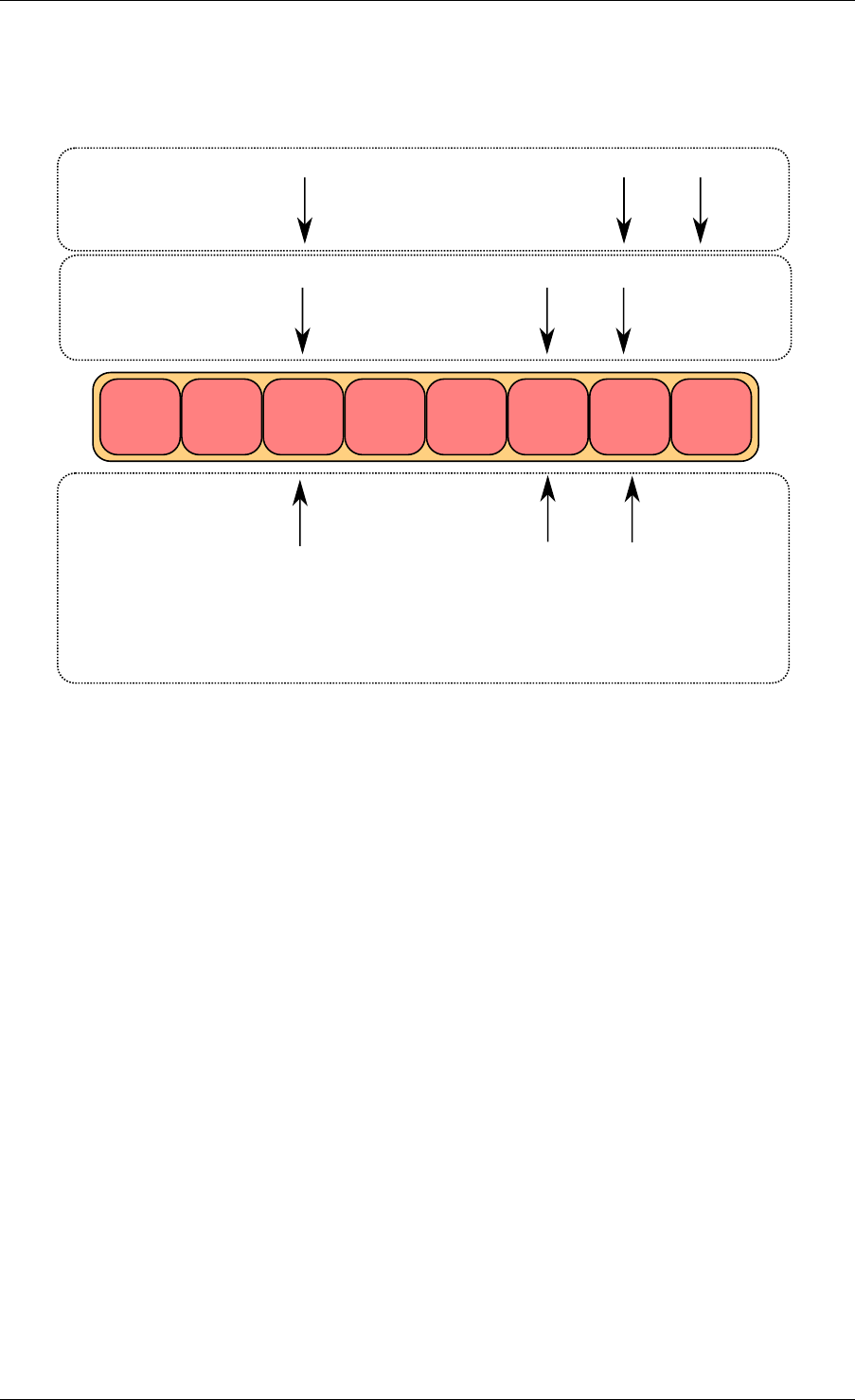

5.5.2 Single Consumer Dequeue

This section explains what occurs when a consumer dequeues an object from the ring. In this

example, only the consumer head and tail (cons_head and cons_tail) are modified and there

is only one consumer.

The initial state is to have a cons_head and cons_tail pointing at the same location.

Dequeue First Step

First, ring->cons_head and ring->prod_tail are copied in local variables. The cons_next local

variable points to the next element of the table, or several elements after in the case of bulk

5.5. Anatomy of a Ring Buffer 27

Programmer’s Guide, Release 18.11.0-rc5

obj1 obj2 obj3

cons_head

cons_tail prod_head

prod_tail

local variables

structure state

cons_tail prod_head prod_next

obj4

Fig. 5.4: Enqueue last step

dequeue.

If there are not enough objects in the ring (this is detected by checking prod_tail), it returns an

error.

Dequeue Second Step

The second step is to modify ring->cons_head in the ring structure to point to the same location

as cons_next.

The pointer to the dequeued object (obj1) is copied in the pointer given by the user.

Dequeue Last Step

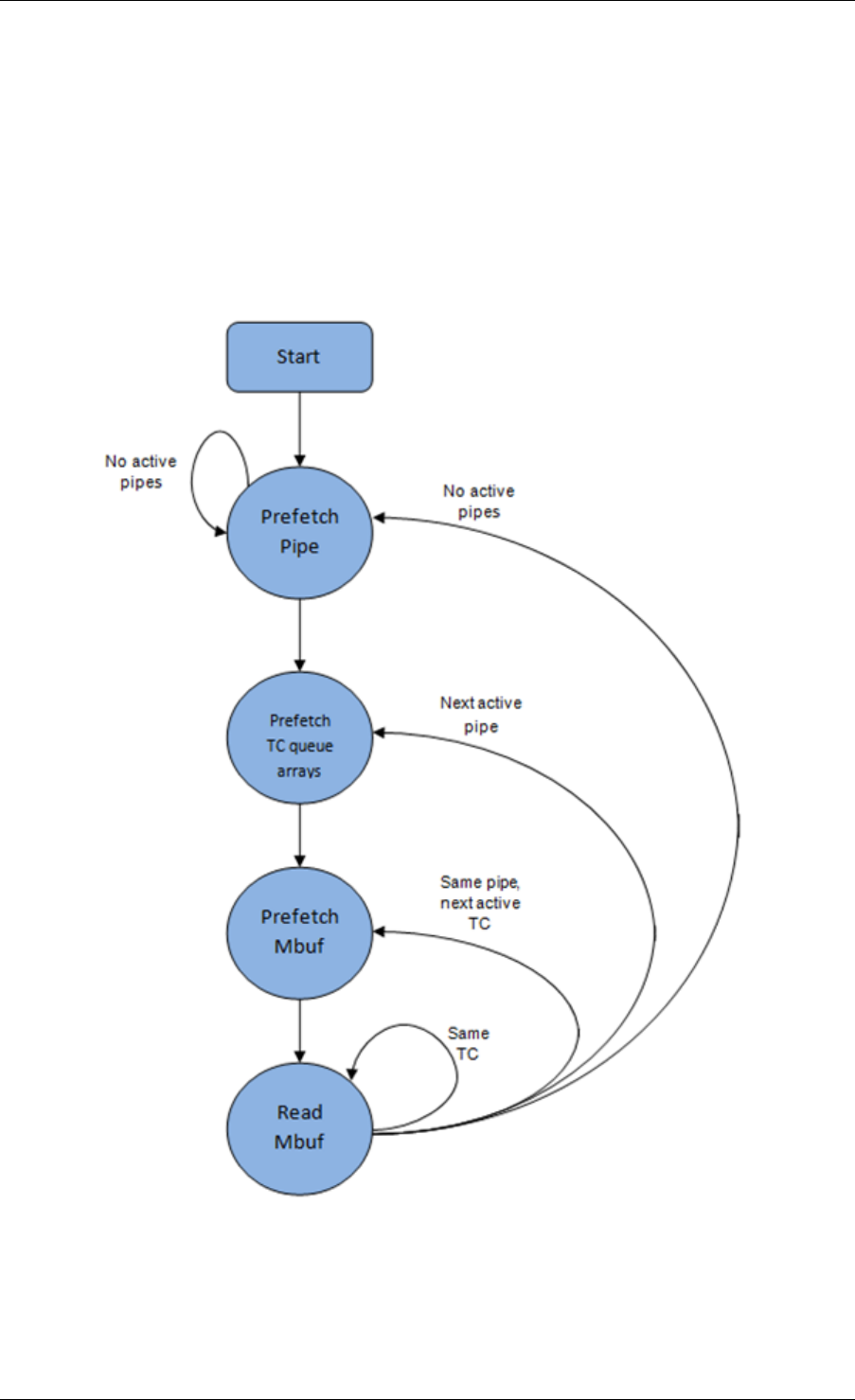

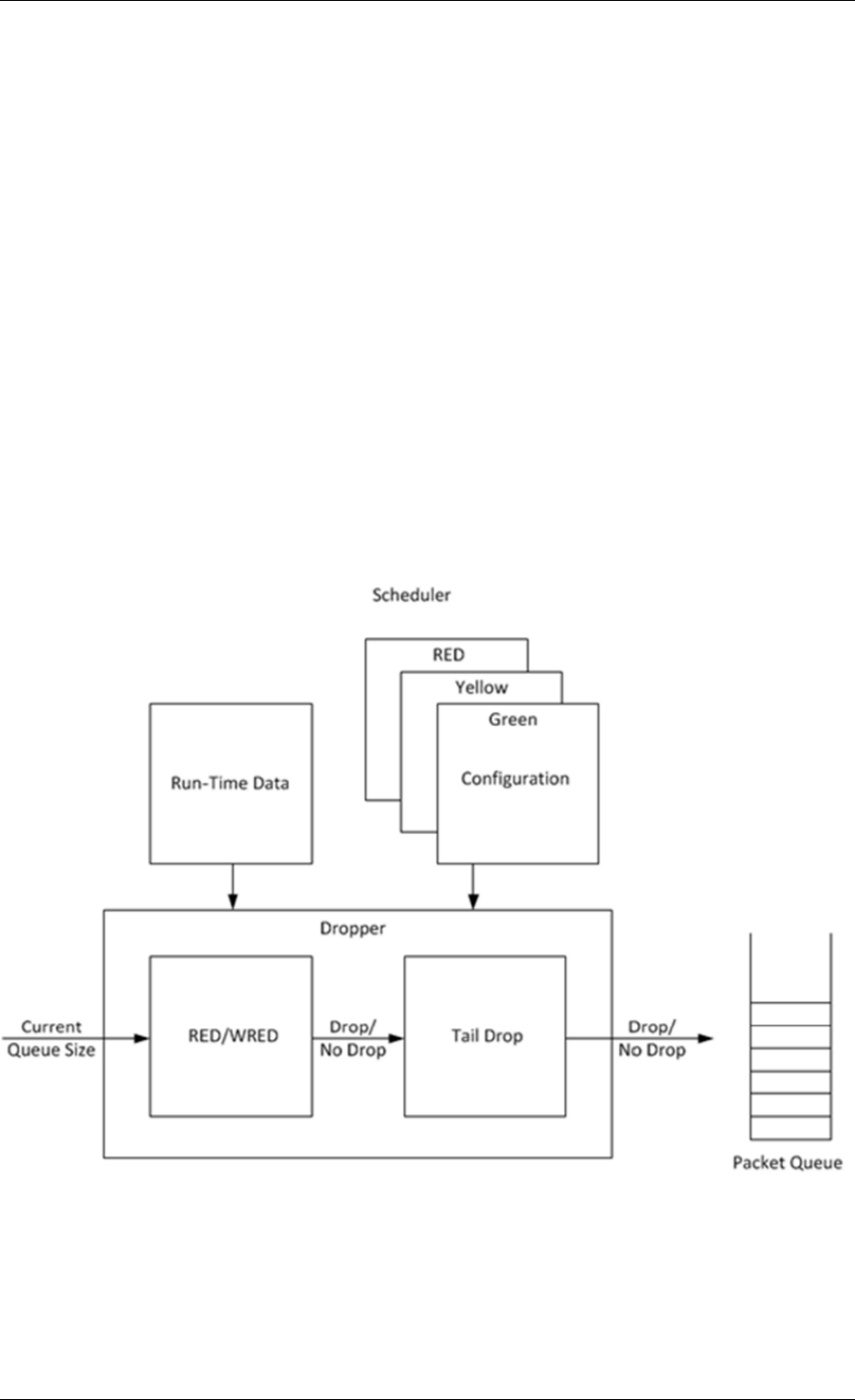

Finally, ring->cons_tail in the ring structure is modified to point to the same location as ring-