Zynq UltraScale+ MPSoC Embedded Design Methodology Guide (UG1228) Ug1228 Ultrafast

User Manual:

Open the PDF directly: View PDF ![]() .

.

Page Count: 217 [warning: Documents this large are best viewed by clicking the View PDF Link!]

- Zynq UltraScale+ MPSoC Embedded Design Methodology Guide

- Revision History

- Table of Contents

- Ch. 1: Introduction

- Ch. 2: Processing System

- Ch. 3: System Software Considerations

- Ch. 4: Power Considerations

- Ch. 5: Programmable Logic

- Ch. 6: Memory

- Ch. 7: Resource Isolation and Partitioning

- Ch. 8: Security

- Ch. 9: Multimedia

- Ch. 10: Peripherals

- Appx. A: Additional Resources and Legal Notices

Zynq UltraScale+ MPSoC

Embedded Design

Methodology Guide

UG1228 (v1.0) March 31, 2017

Zynq UltraScale+ MPSoC Embedded Design Methodology Guide 3

UG1228 (v1.0) March 31, 2017 www.xilinx.com

Table of Contents

Revision History . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 2

Chapter 1: Introduction

Block Diagram . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 7

Vector Methodology . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 9

Accessing Documentation and Training . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 11

Chapter 2: Processing System

Defining Your Processing Needs . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 13

Processing System Methodology . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 14

Heterogeneous Computing Concepts . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 18

Application Processing Unit (APU) . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 20

APU Virtualization Support . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 23

Real-Time Processing Unit (RPU) . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 27

Interconnect . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 29

Interrupts . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 37

Workload Acceleration Using the PL . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 42

General-Purpose Computing Acceleration. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 44

Chapter 3: System Software Considerations

Defining Your System Software Needs. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 46

System Software Methodology. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 47

Boot Process Software. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 53

System Software Stacks. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 58

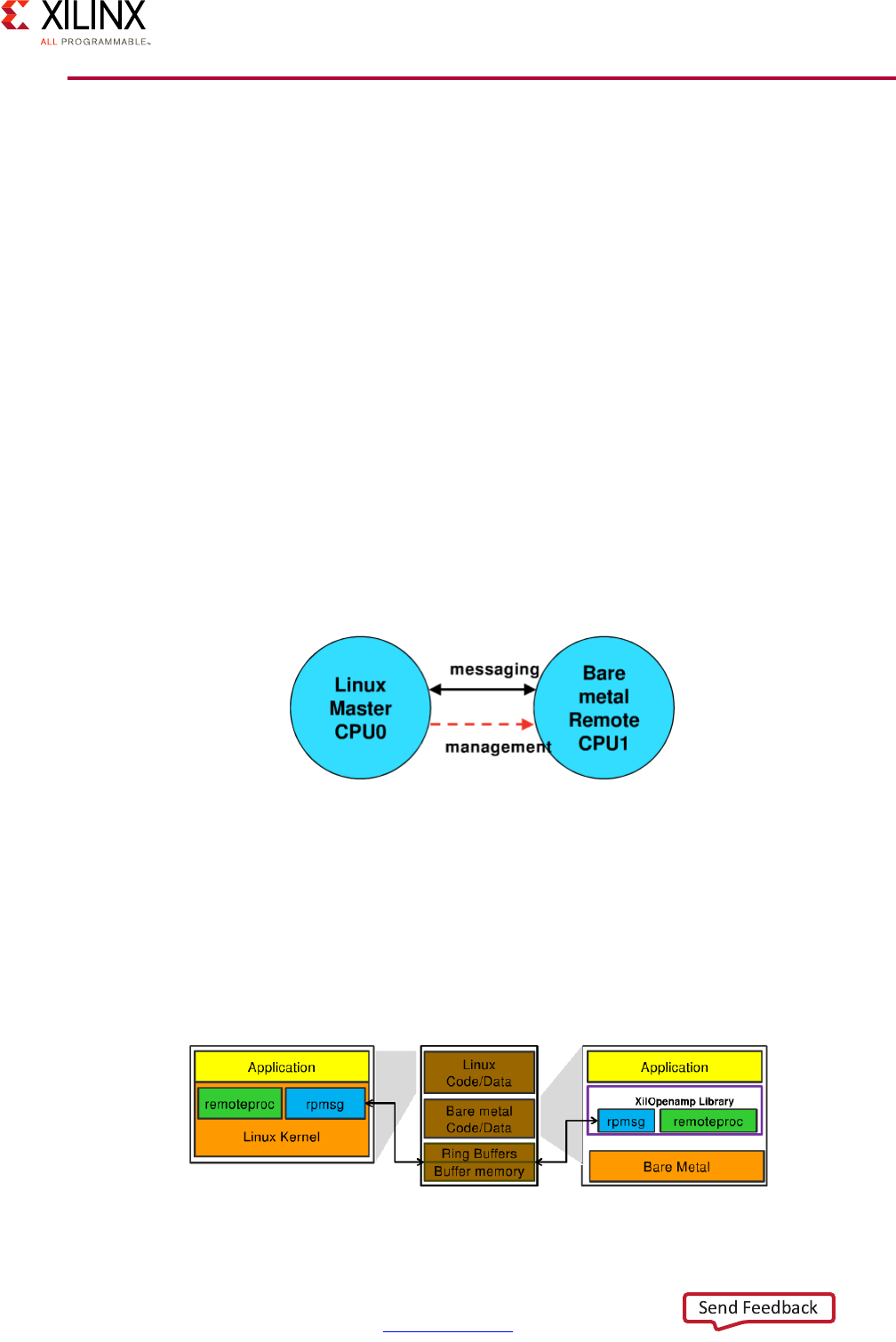

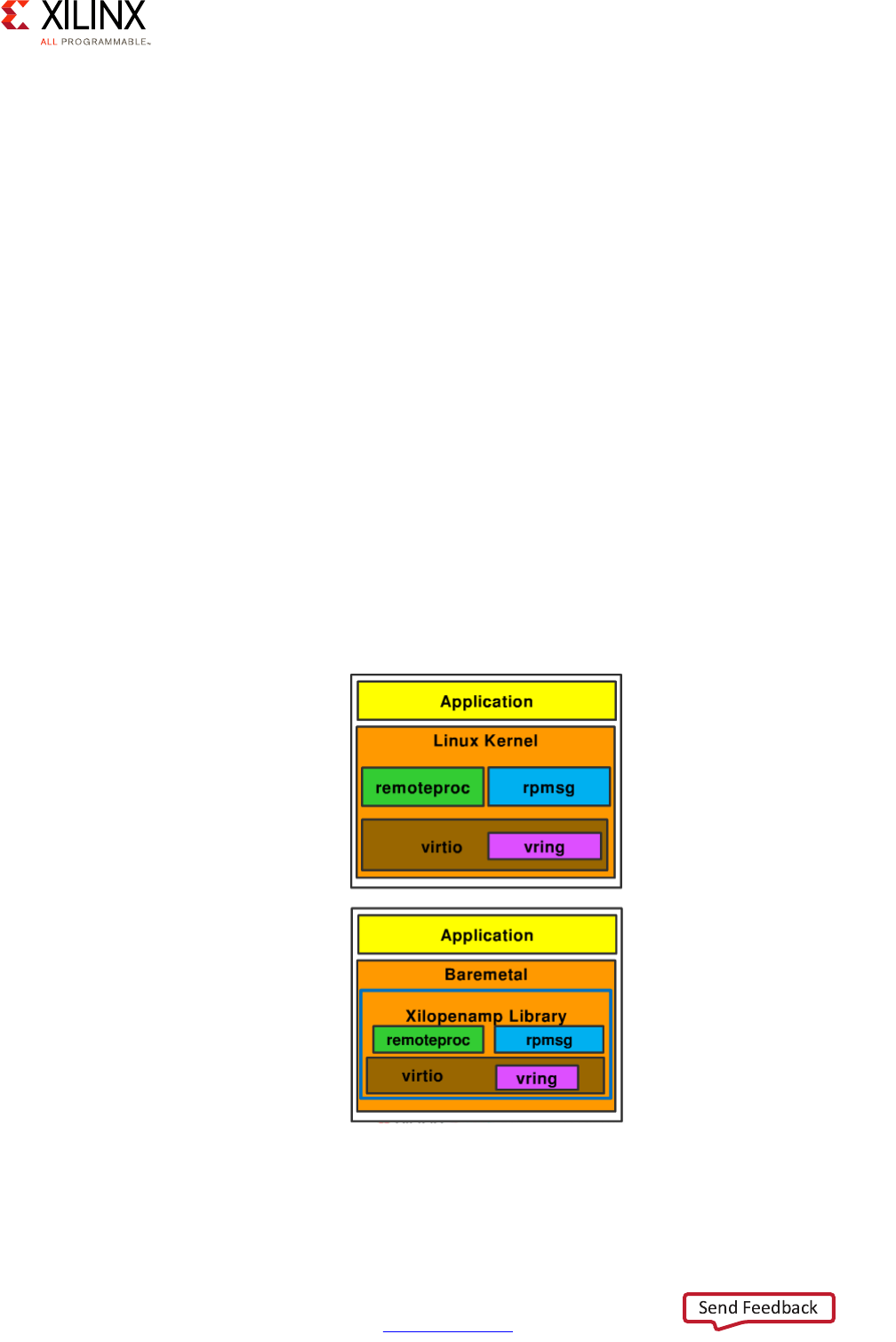

OpenAMP Framework . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 64

Xen Hypervisor . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 67

PMU Software . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 70

Software Development Tools . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 72

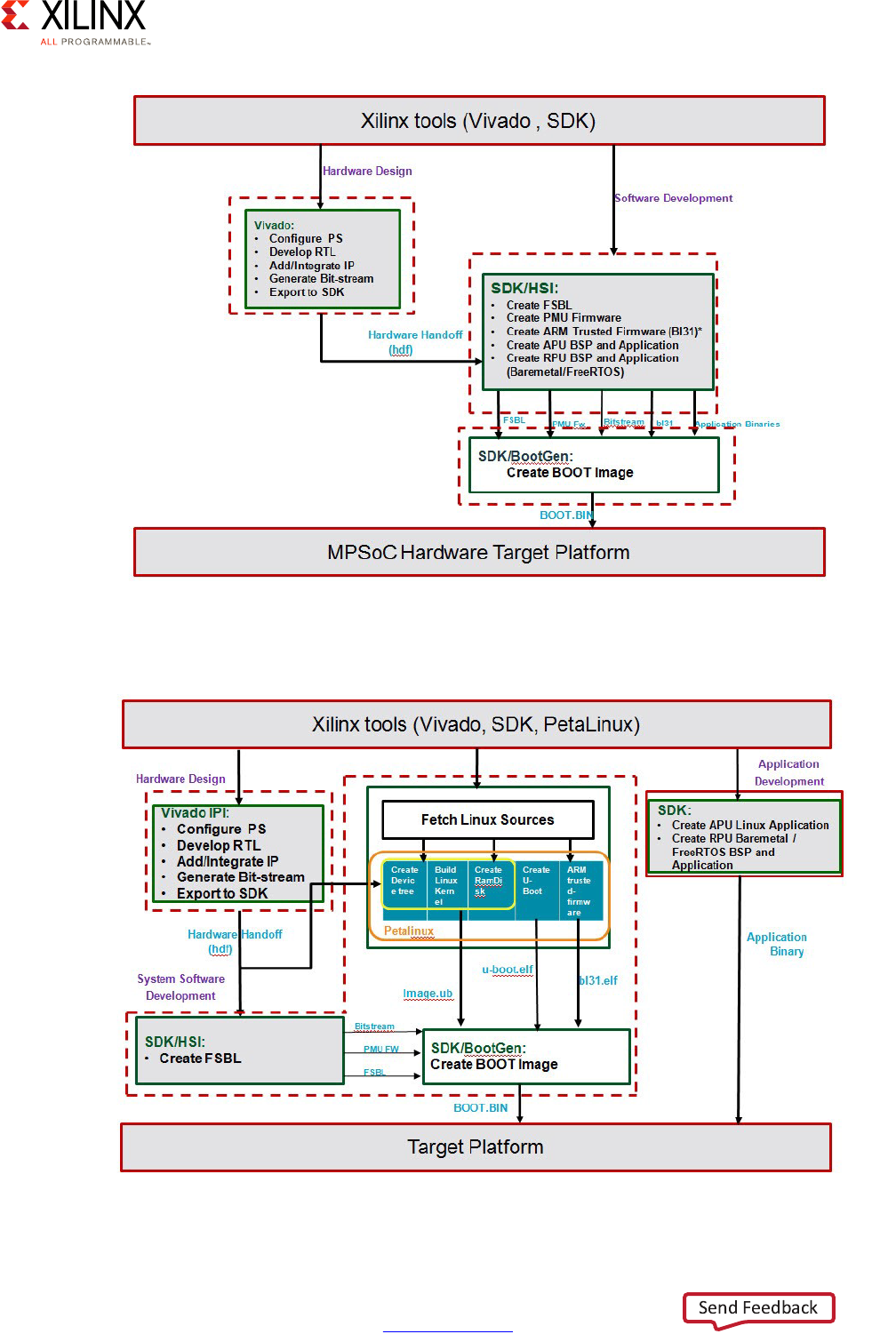

Developer Flow . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 75

Chapter 4: Power Considerations

Defining Your Power Needs . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 80

Power Tuning Methodology . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 81

Zynq UltraScale+ MPSoC Embedded Design Methodology Guide 4

UG1228 (v1.0) March 31, 2017 www.xilinx.com

Four Major Power Domains . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 86

Power Islands and Power Gating . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 92

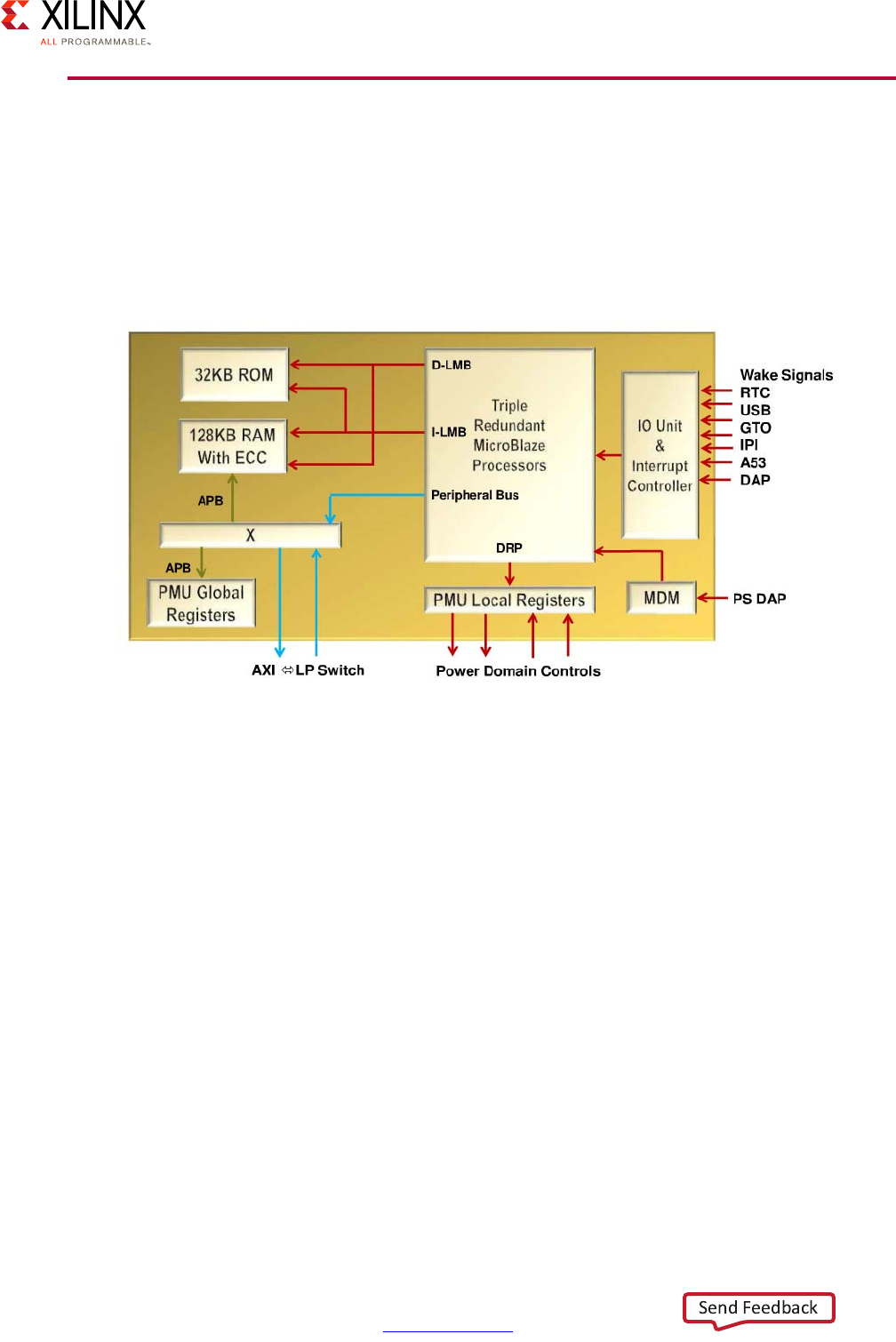

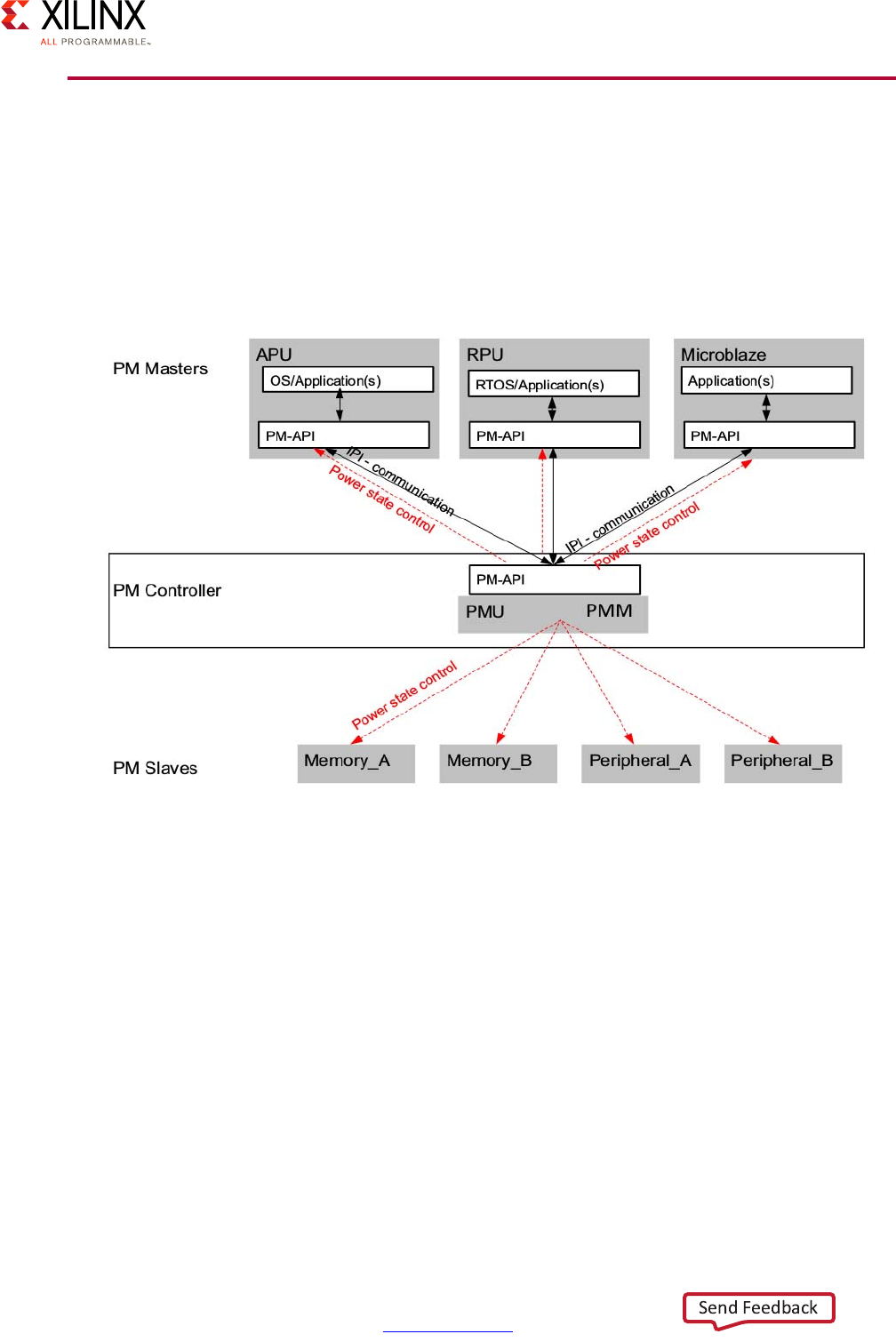

Platform Management Unit . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 93

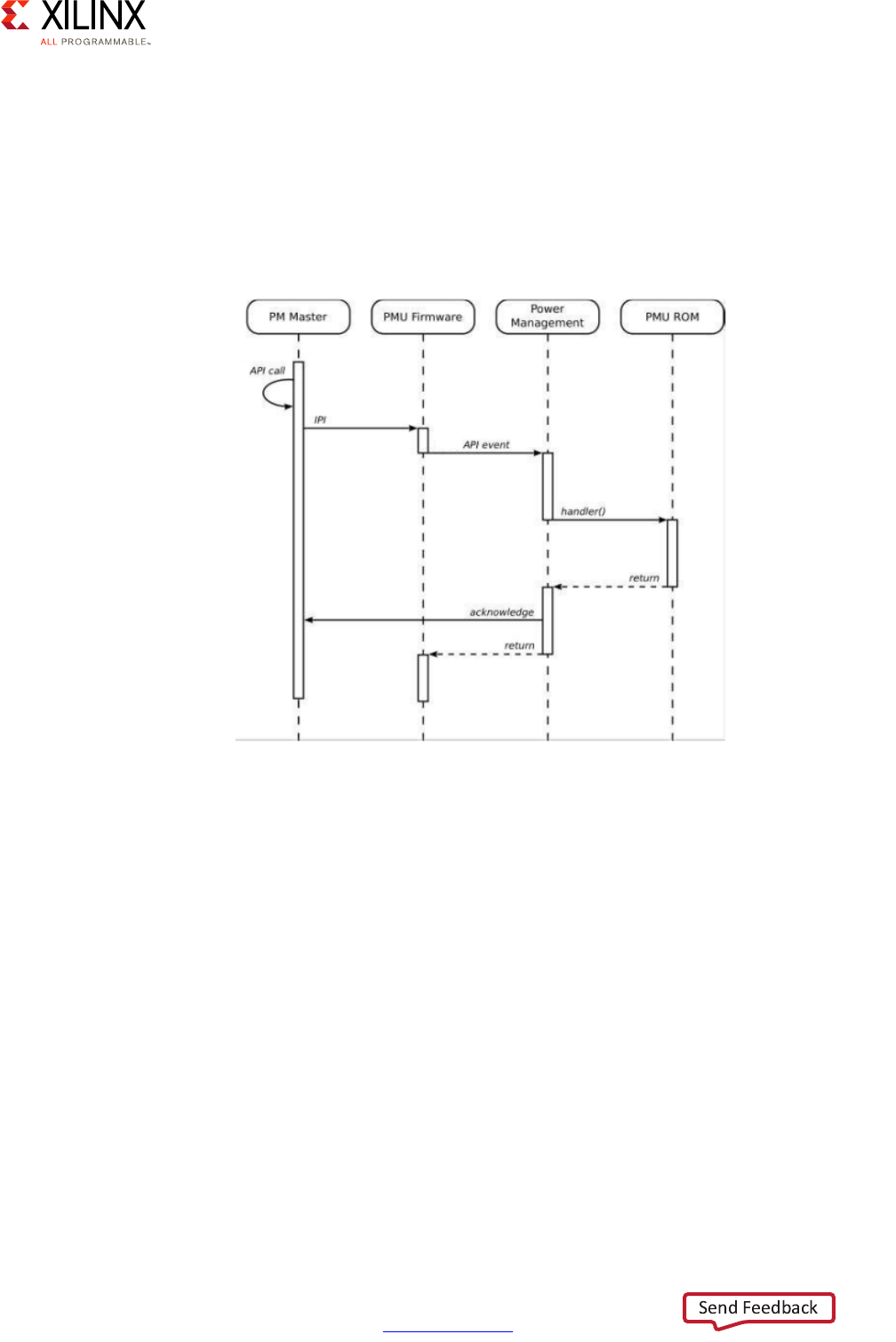

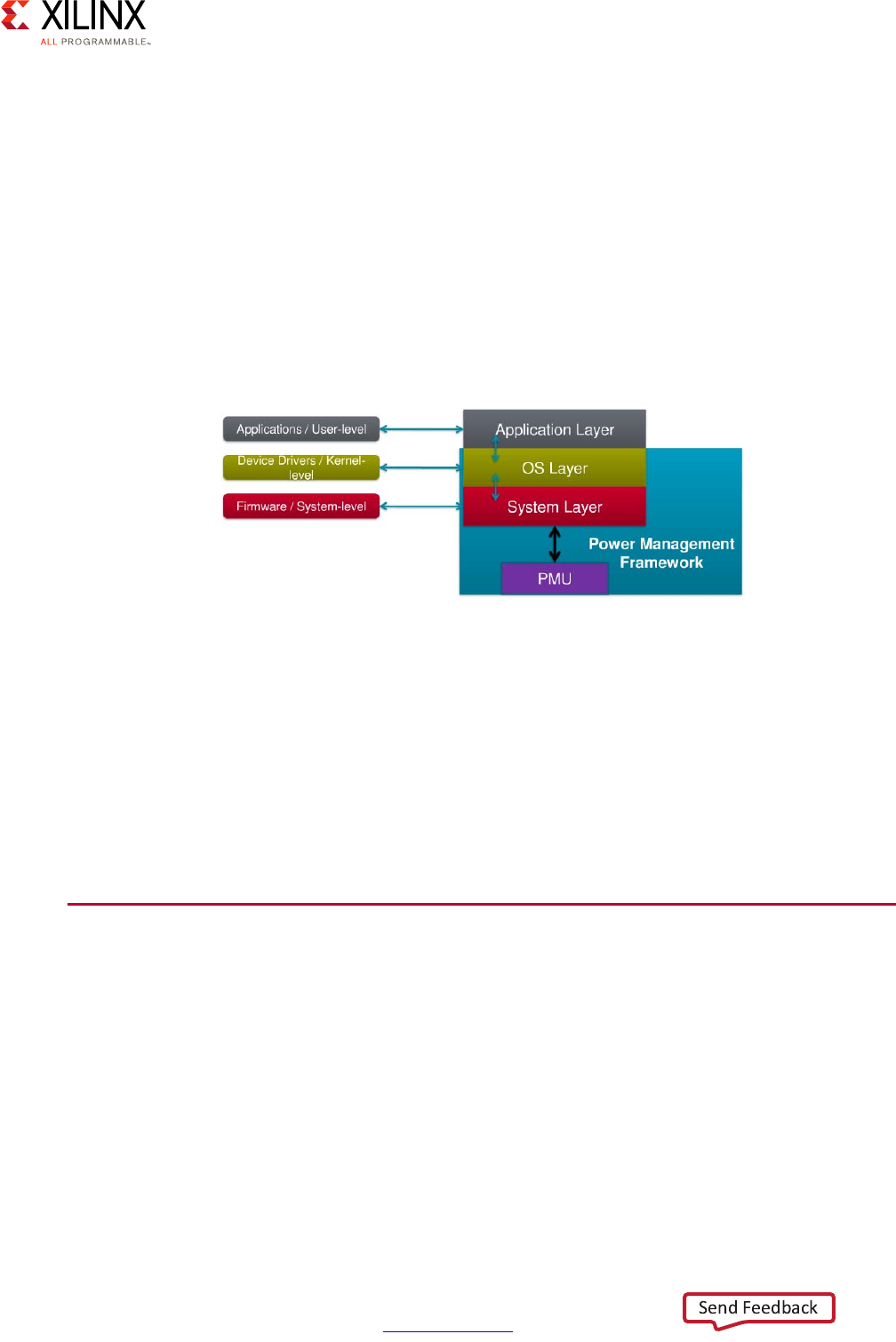

Power Management Software Architecture . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 95

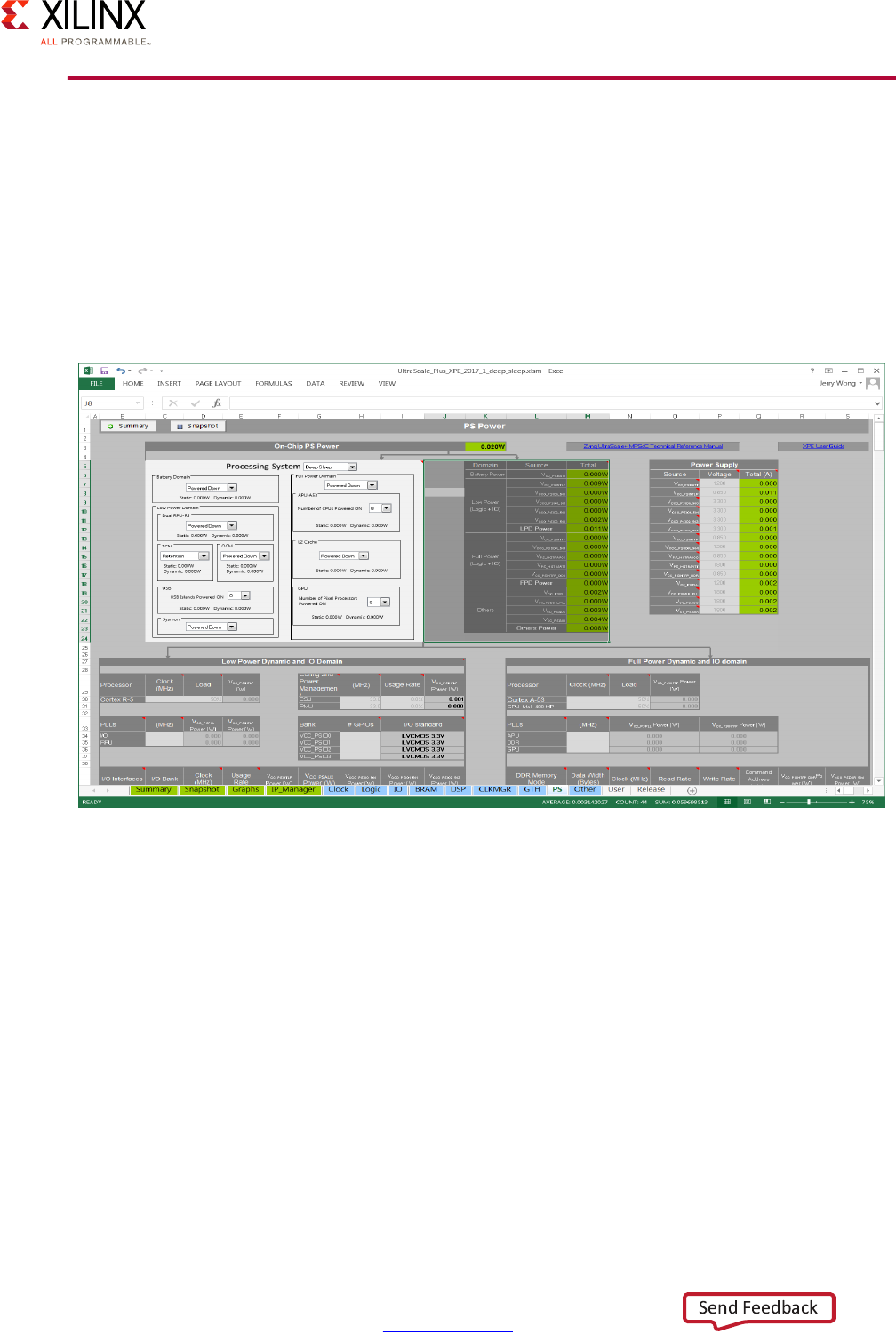

Xilinx Power Estimator . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 96

Chapter 5: Programmable Logic

Defining Your PL Needs . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 97

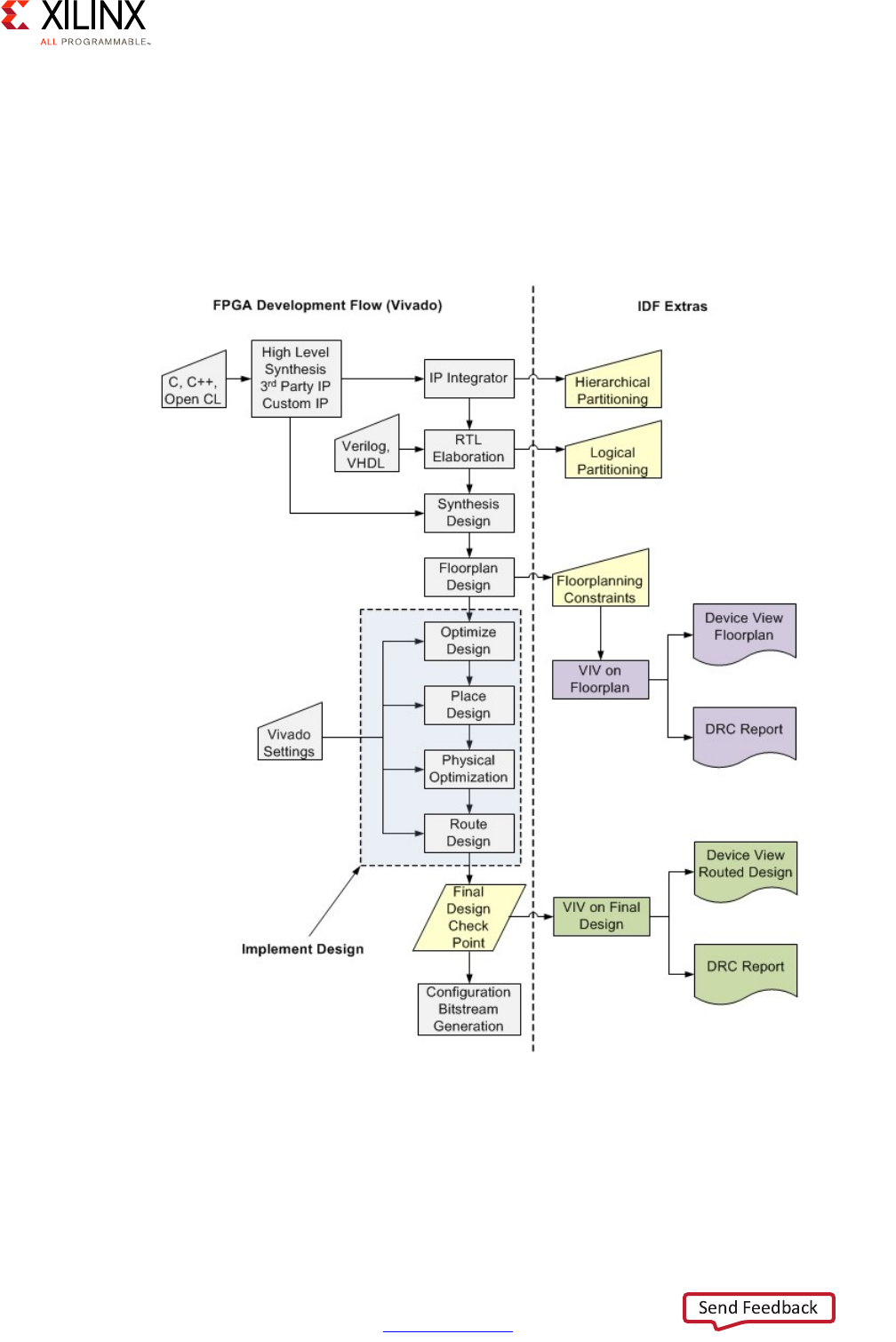

PL Methodology . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 98

Logic . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 104

Integrated IP Support . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 108

Configuration and Partial Reconfiguration . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 110

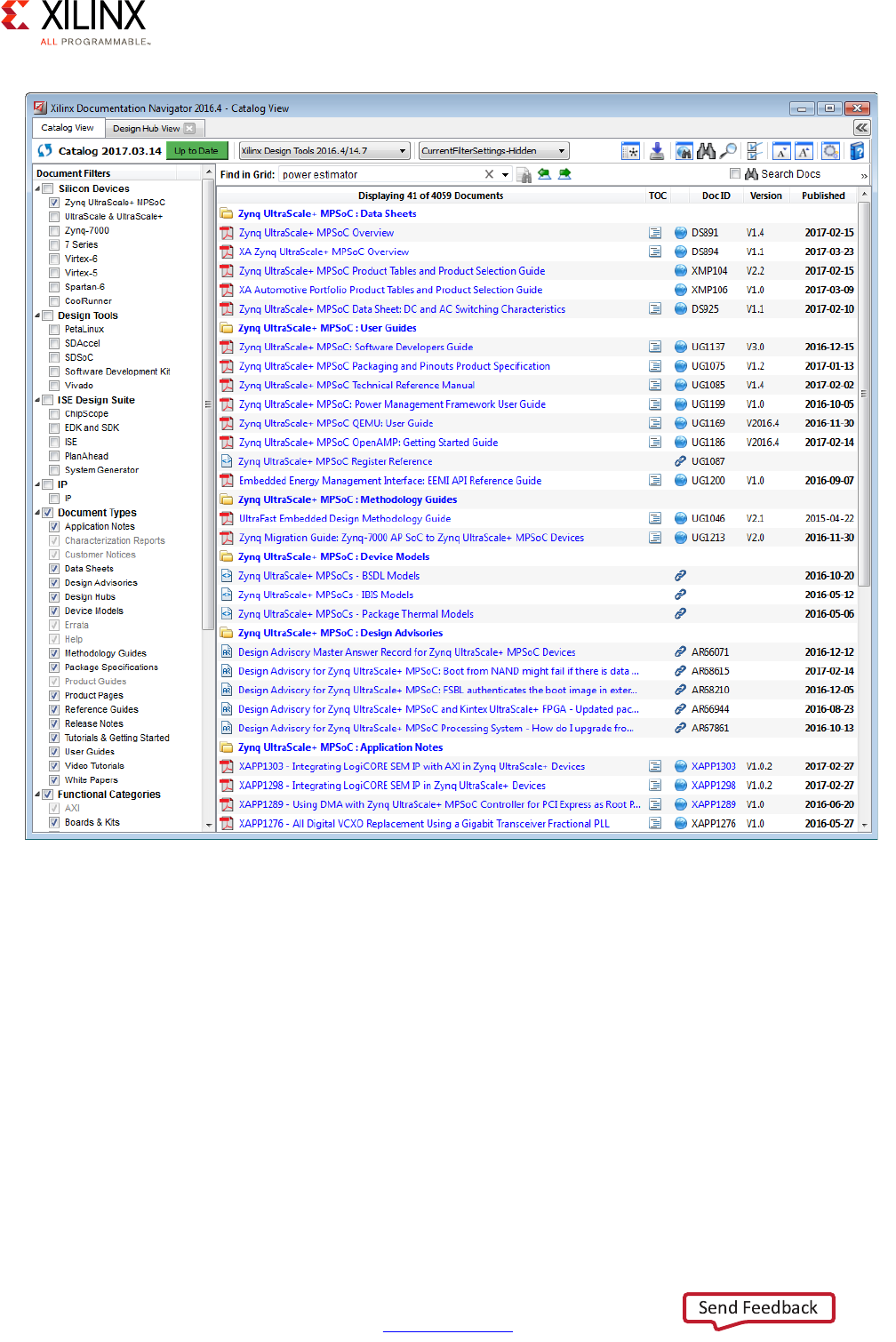

Power Reduction Features . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 111

Chapter 6: Memory

Memory Introduction . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 113

Defining Your Memory Needs. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 113

Memory Methodology. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 114

Built-In Memory Blocks . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 116

PS DDR Memory and Controller . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 118

Global System Memory Map. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 119

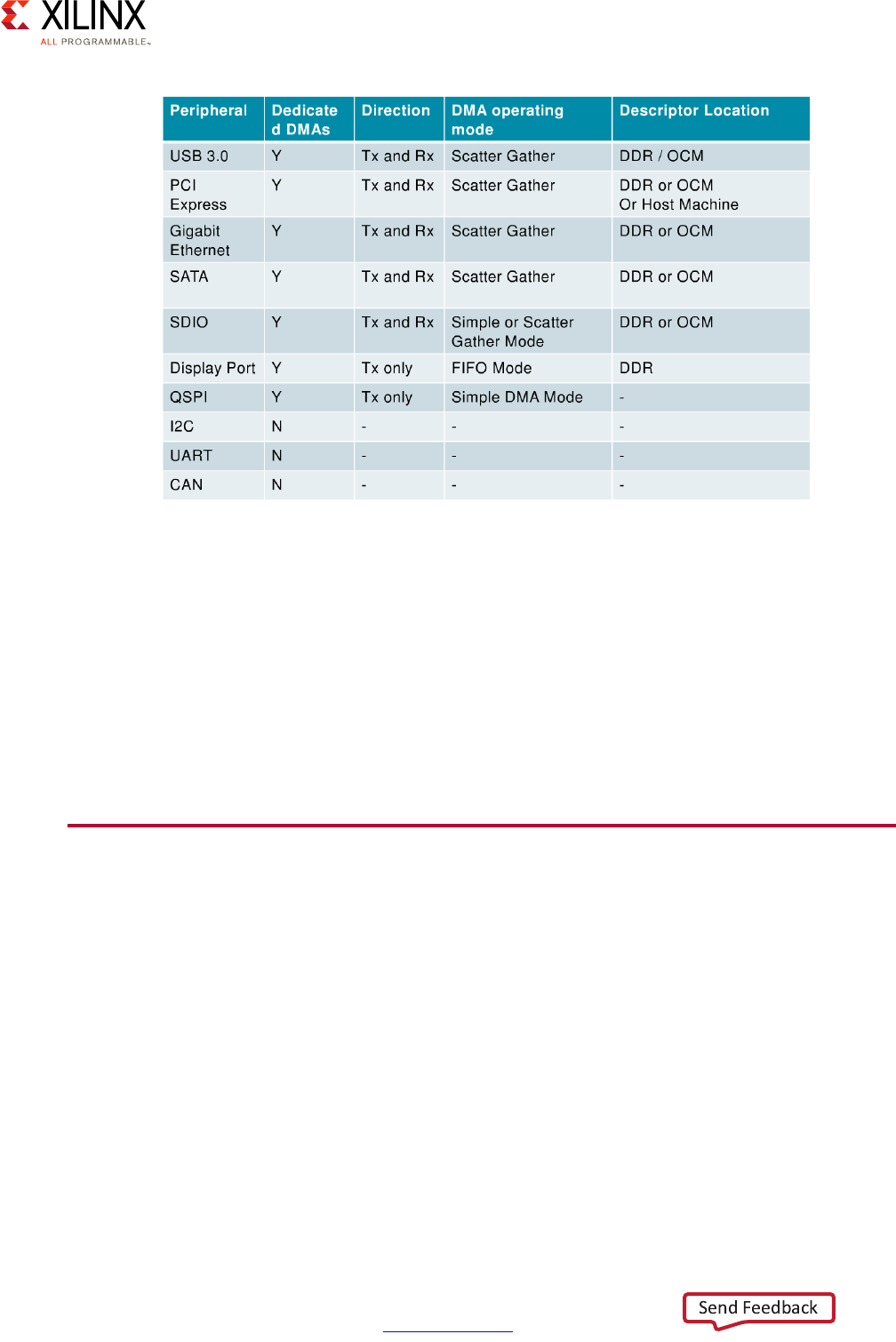

PS DMA Controllers . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 121

External Memory with the PL . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 125

Chapter 7: Resource Isolation and Partitioning

Defining Your Resource Isolation and Partitioning Needs . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 127

Resource Isolation and Partitioning Methodology . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 128

ARM TrustZone . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 135

System Memory Management Unit . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 139

Xilinx Memory Protection Unit . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 142

Xilinx Peripheral Protection Unit . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 145

Xen Hypervisor . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 149

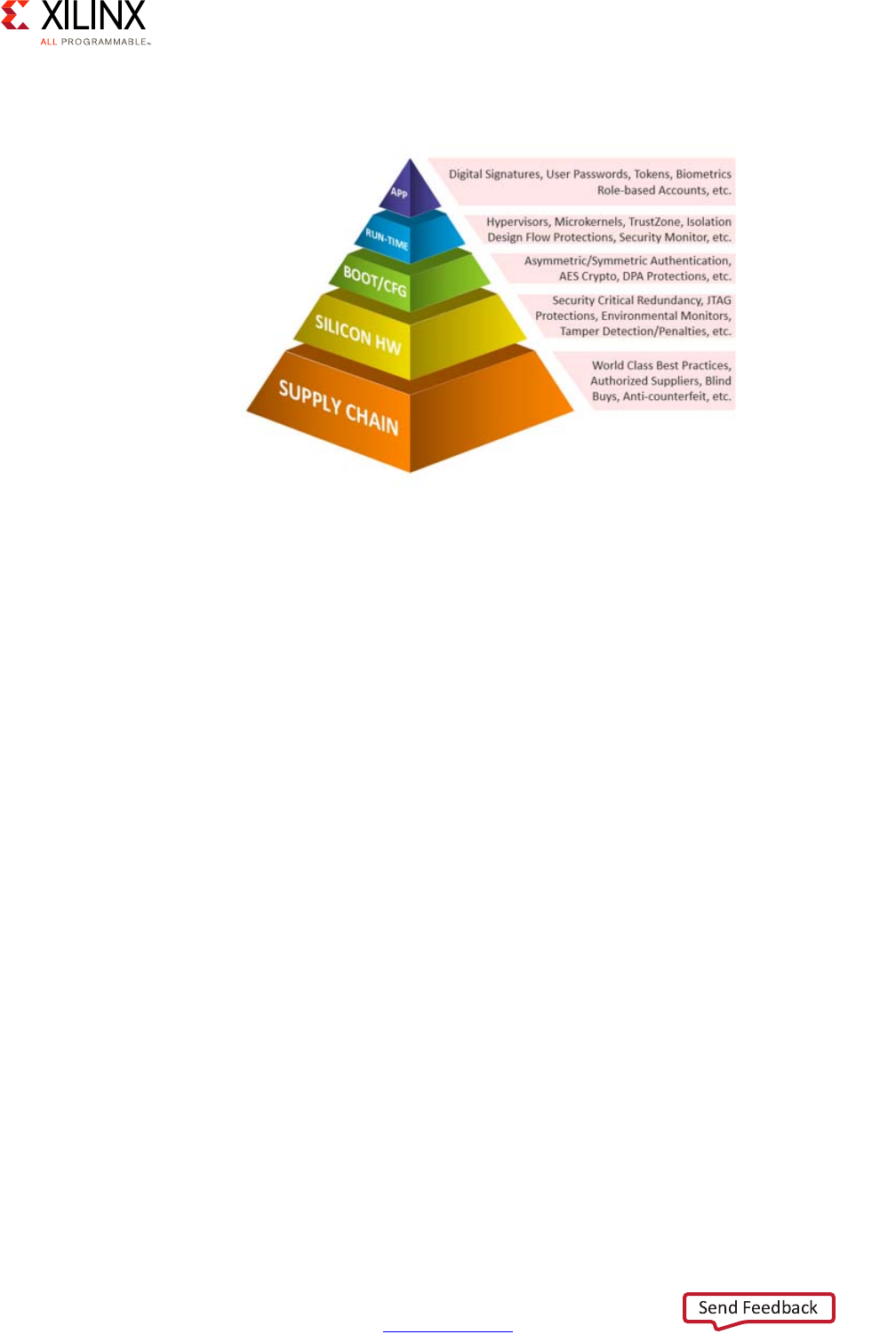

Chapter 8: Security

Defining Your Security Needs . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 150

Security Methodology . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 151

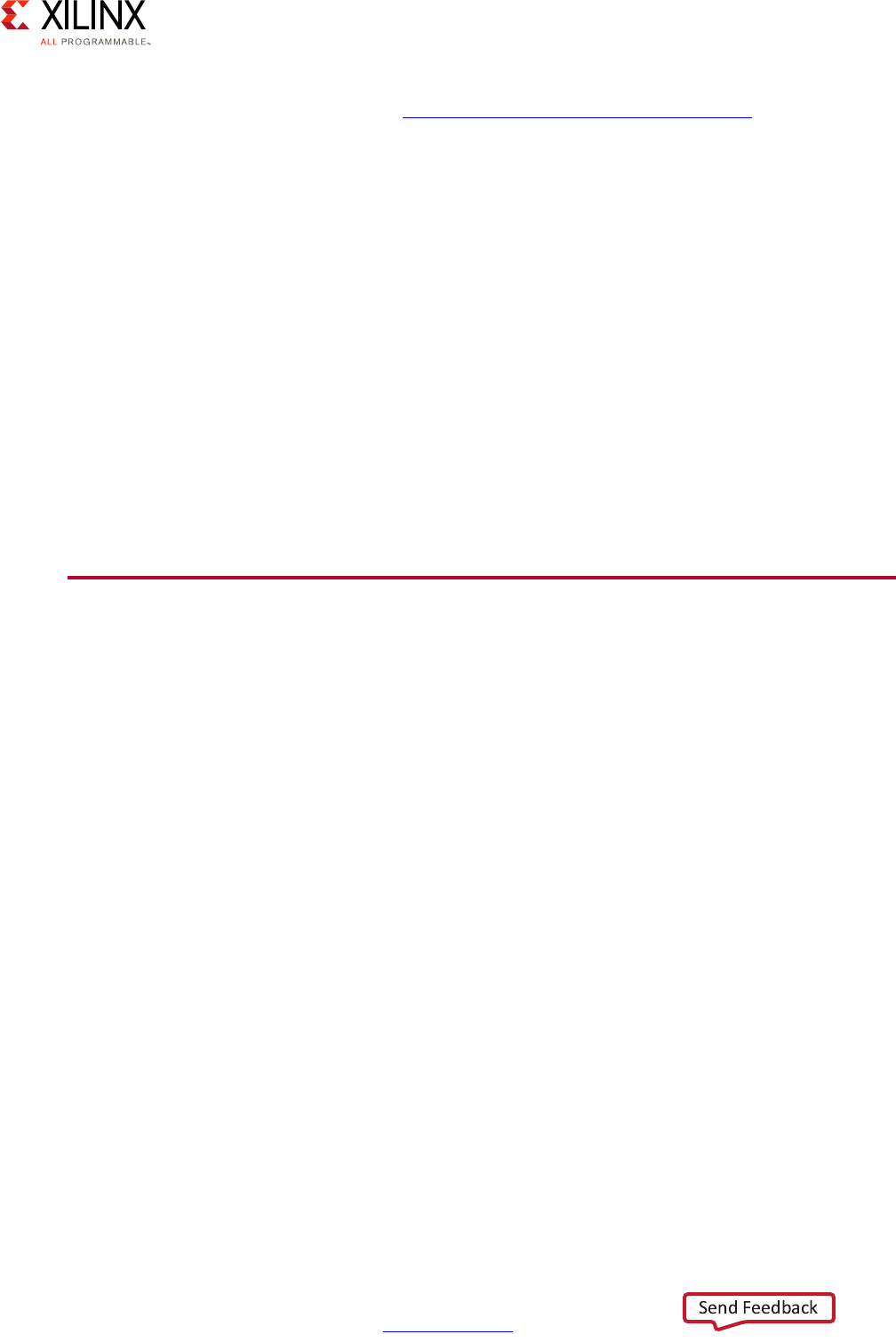

Security Features Overview . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 153

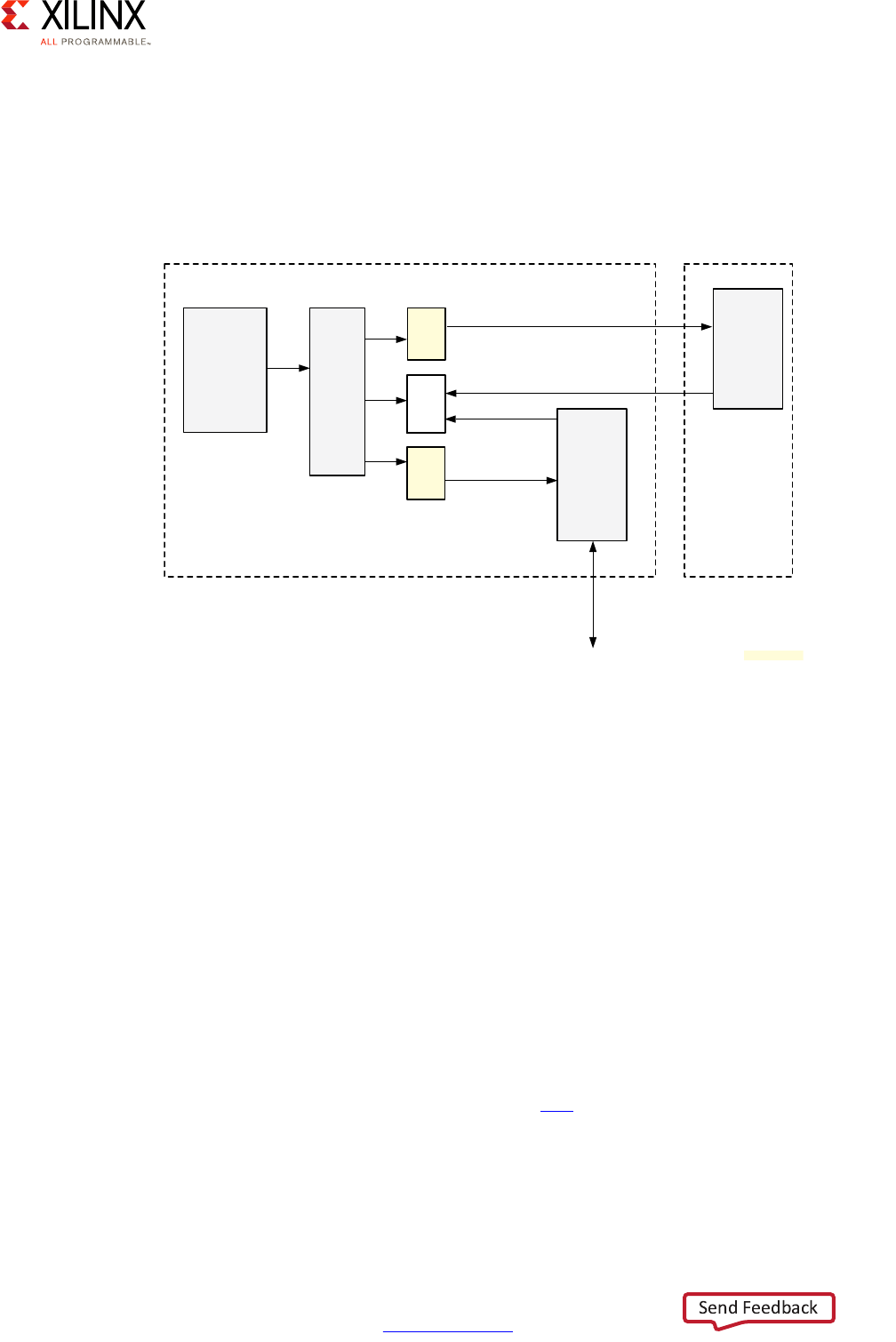

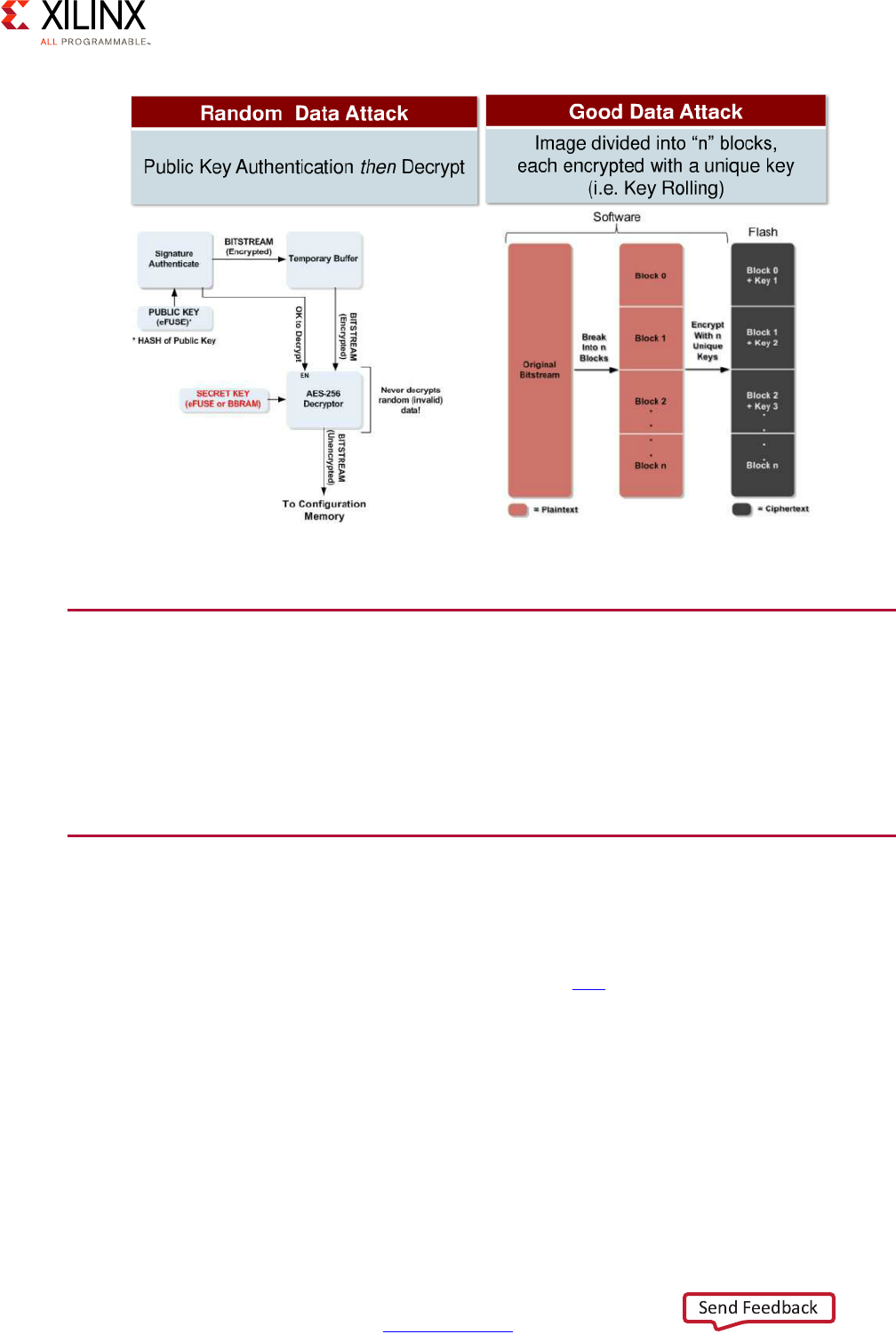

Configuration Security and Secure Boot. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 155

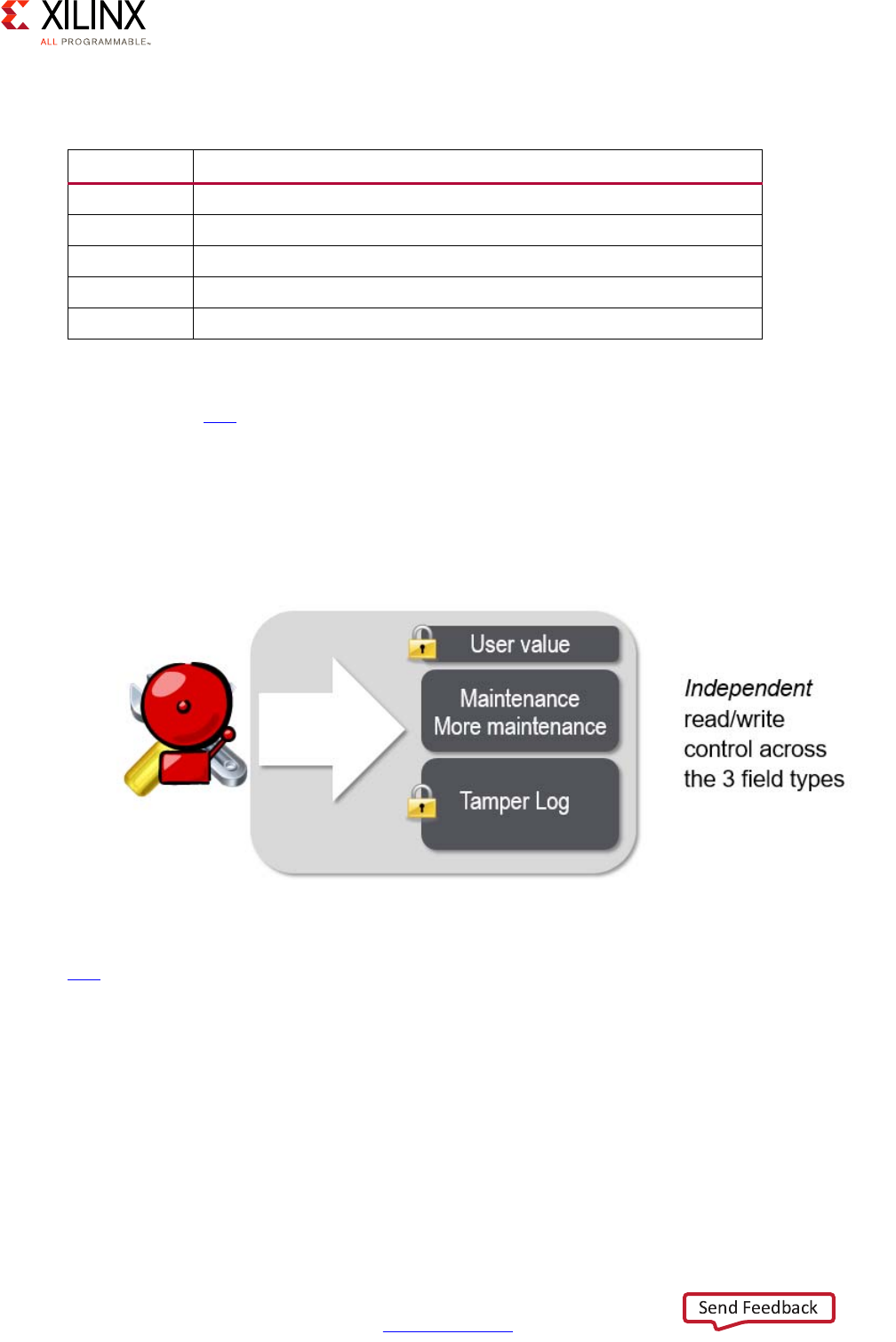

Device and Data Security. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 159

Protection Against DPA Attacks . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 162

Zynq UltraScale+ MPSoC Embedded Design Methodology Guide 5

UG1228 (v1.0) March 31, 2017 www.xilinx.com

CSU Hardware Accelerators . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 163

Functional Safety . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 163

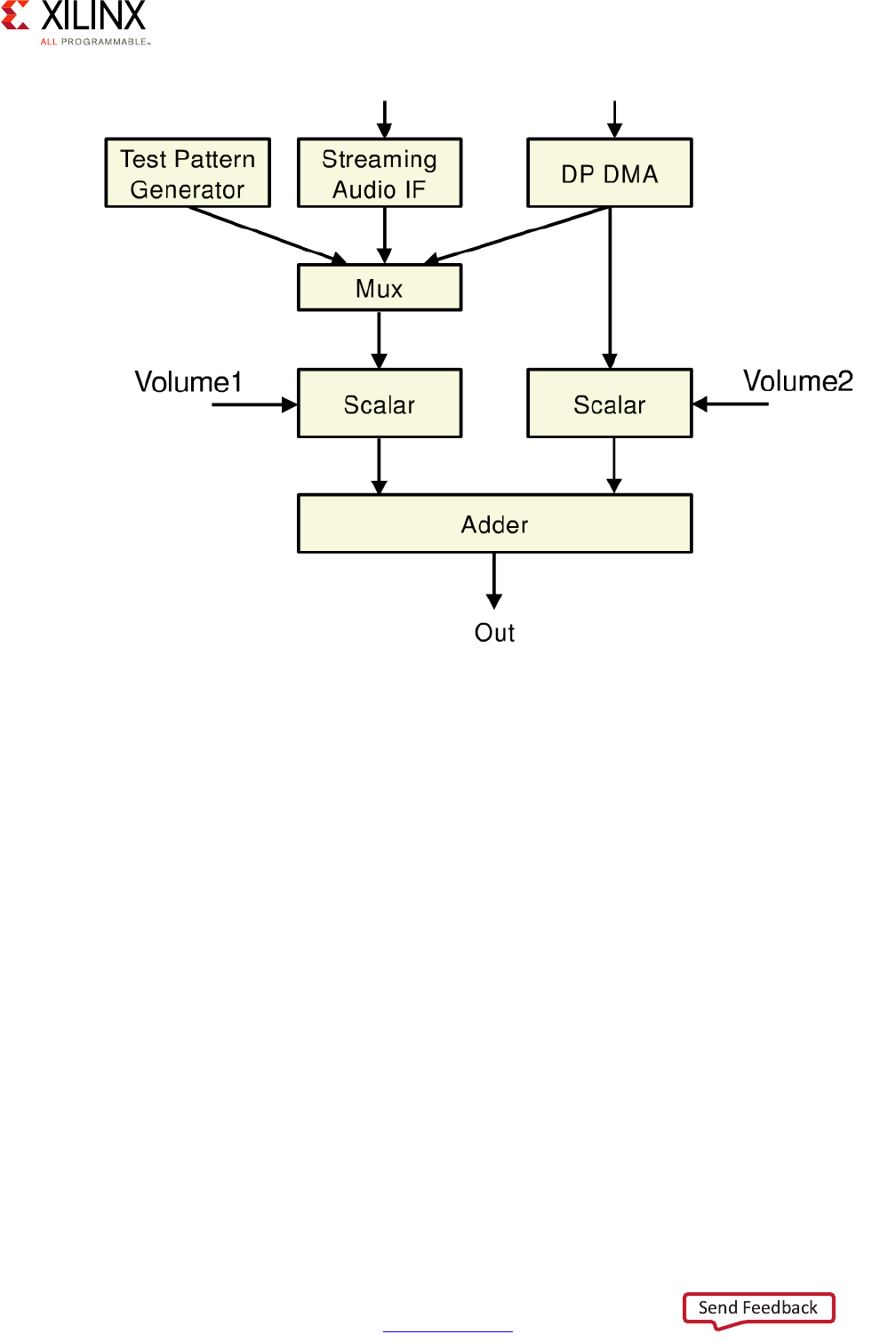

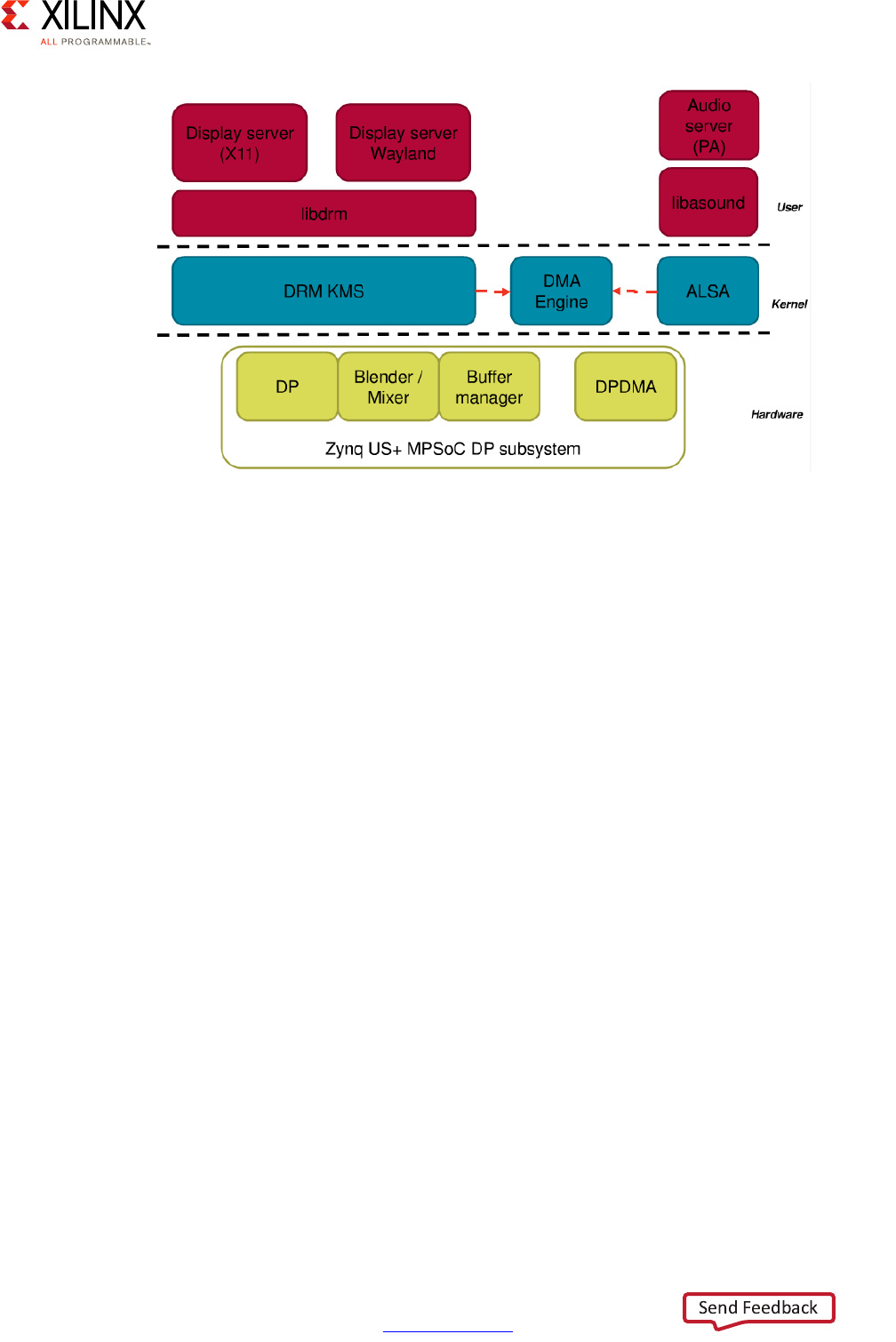

Chapter 9: Multimedia

Defining Your Multimedia Needs . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 165

Multimedia Methodology . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 166

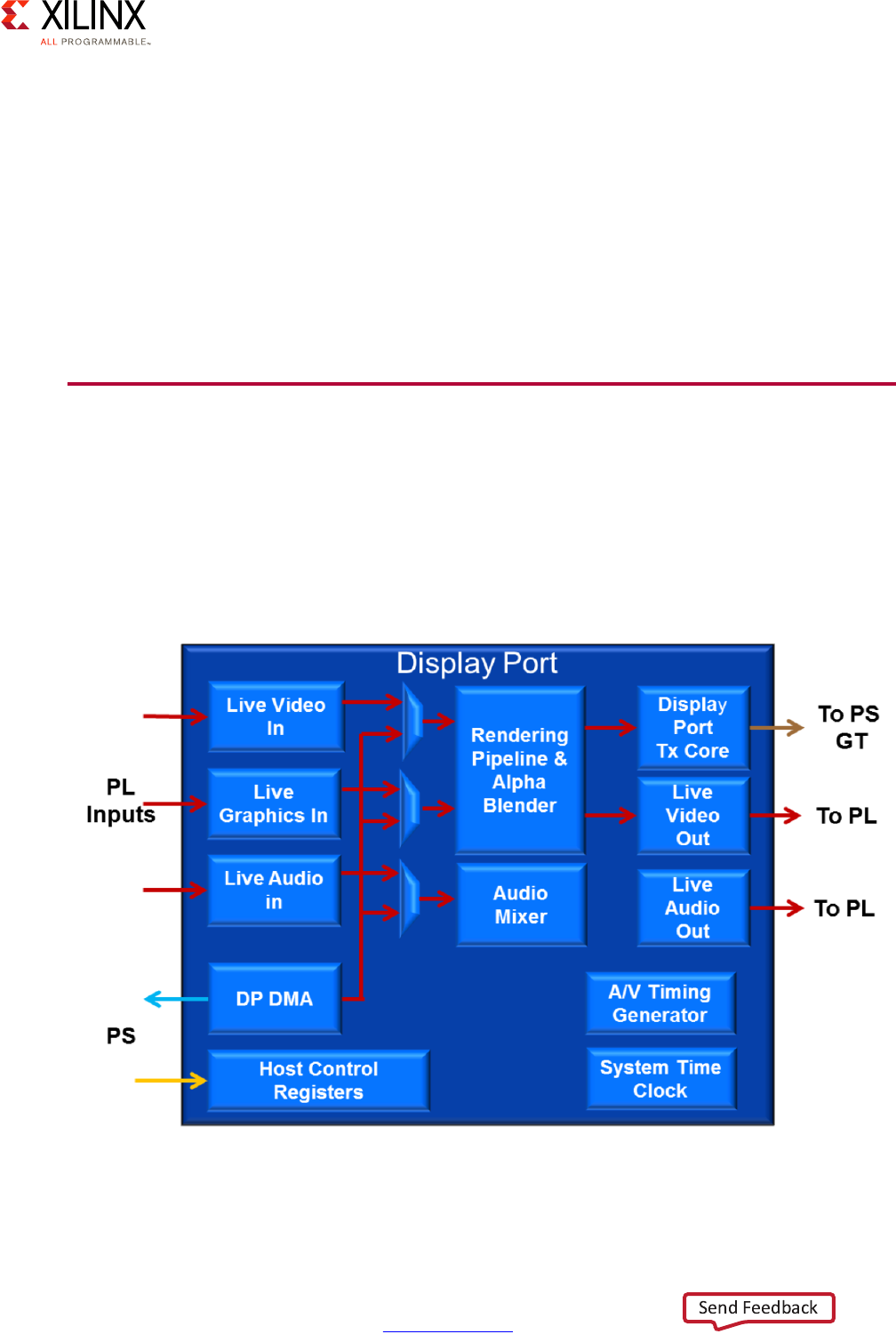

DisplayPort . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 167

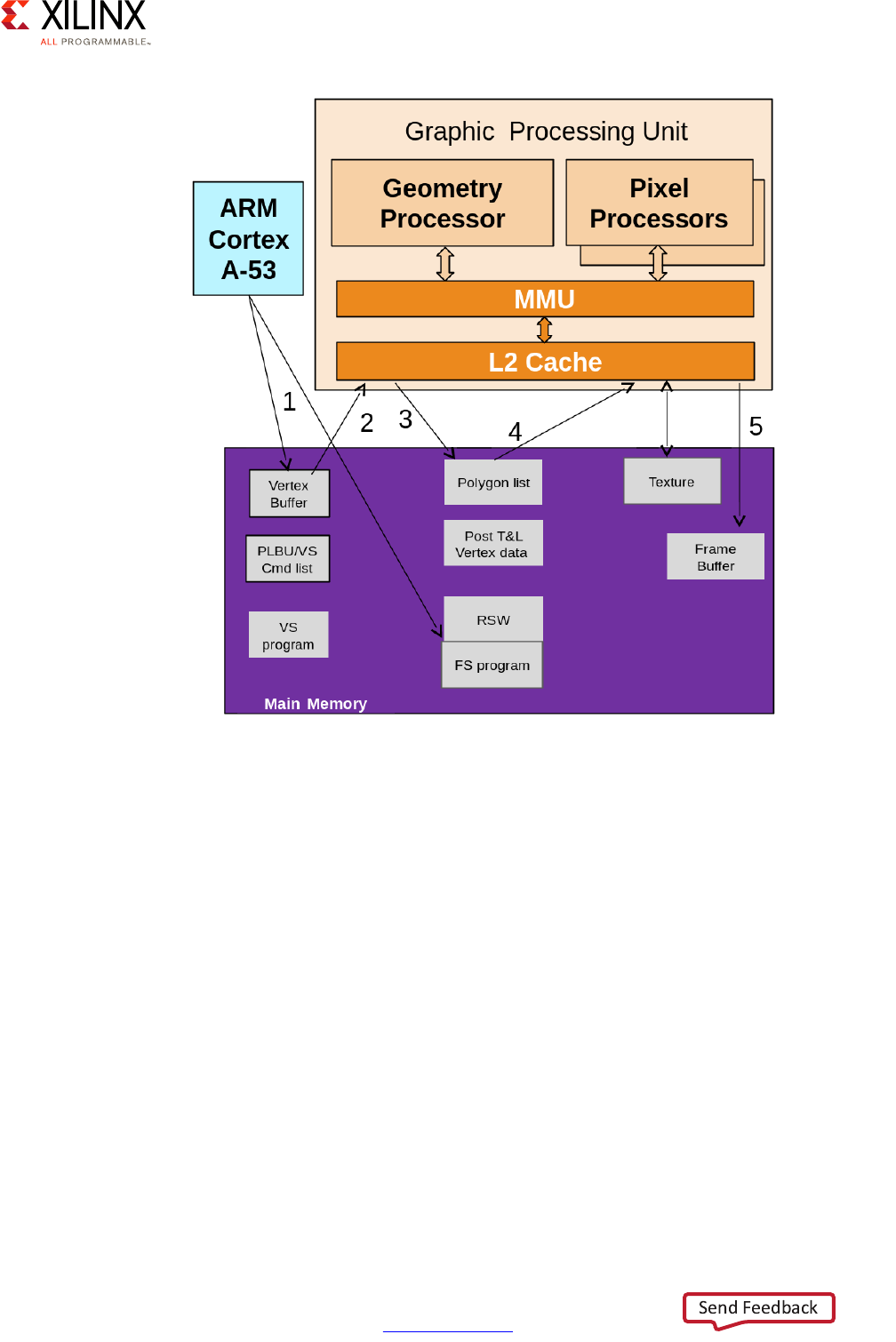

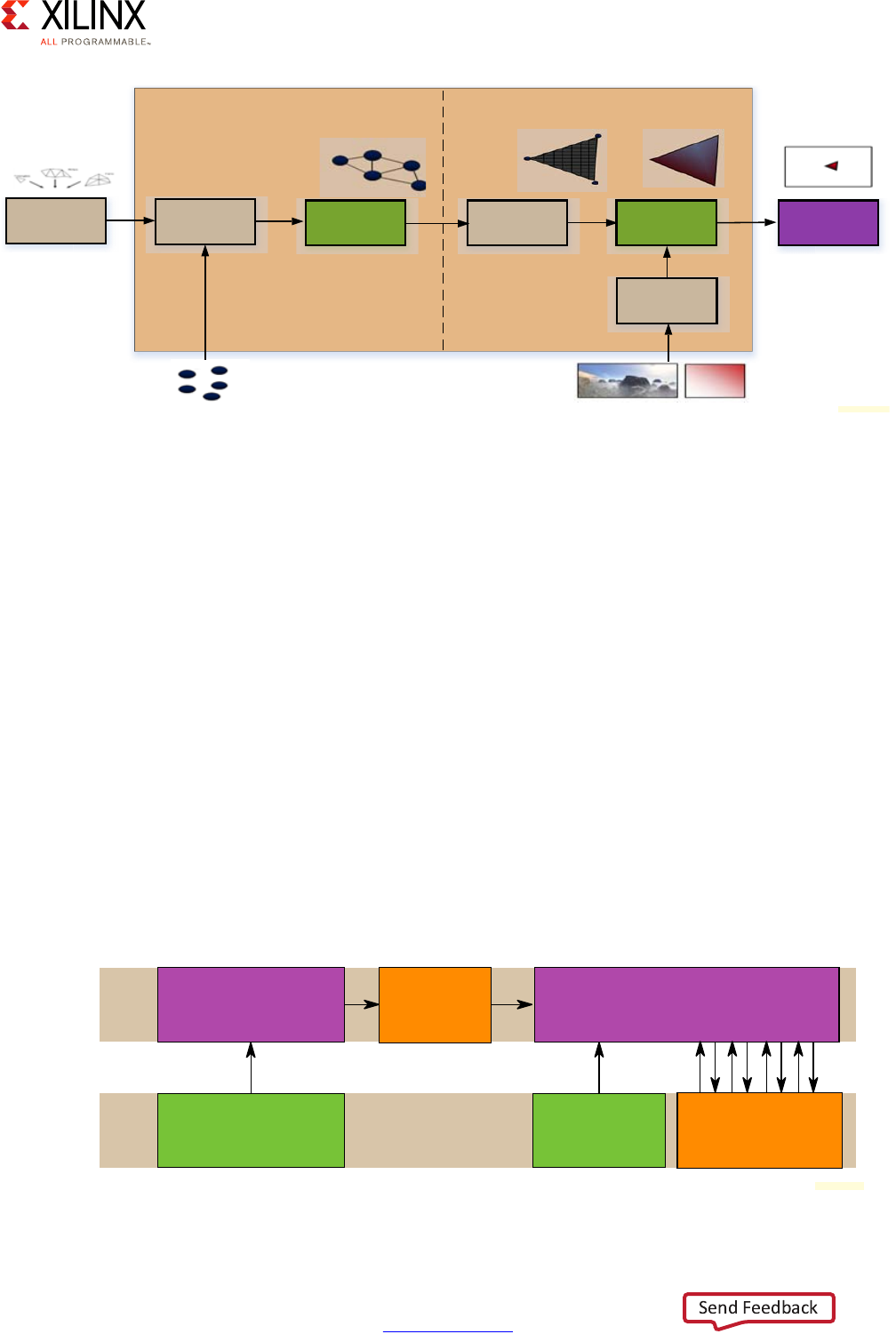

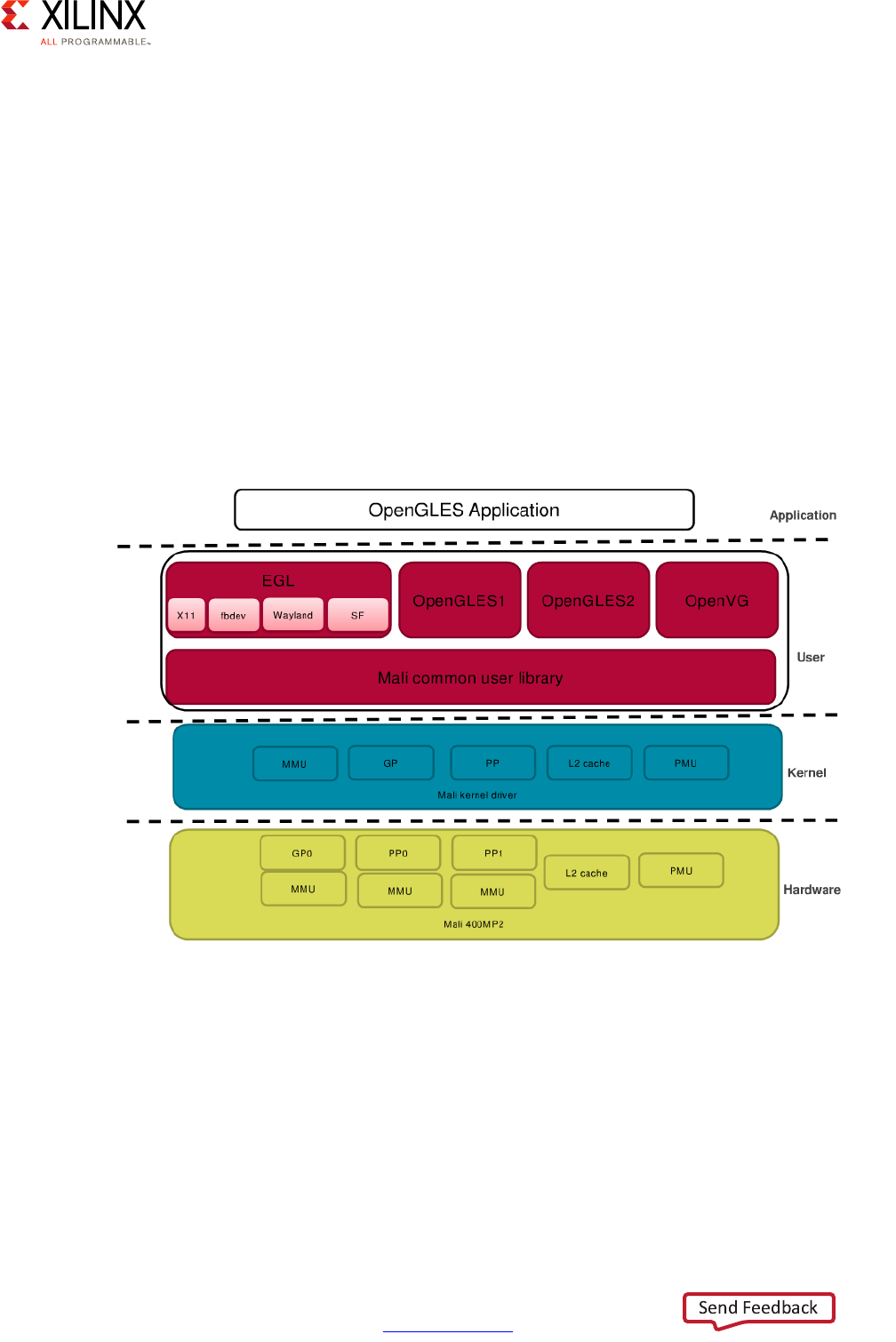

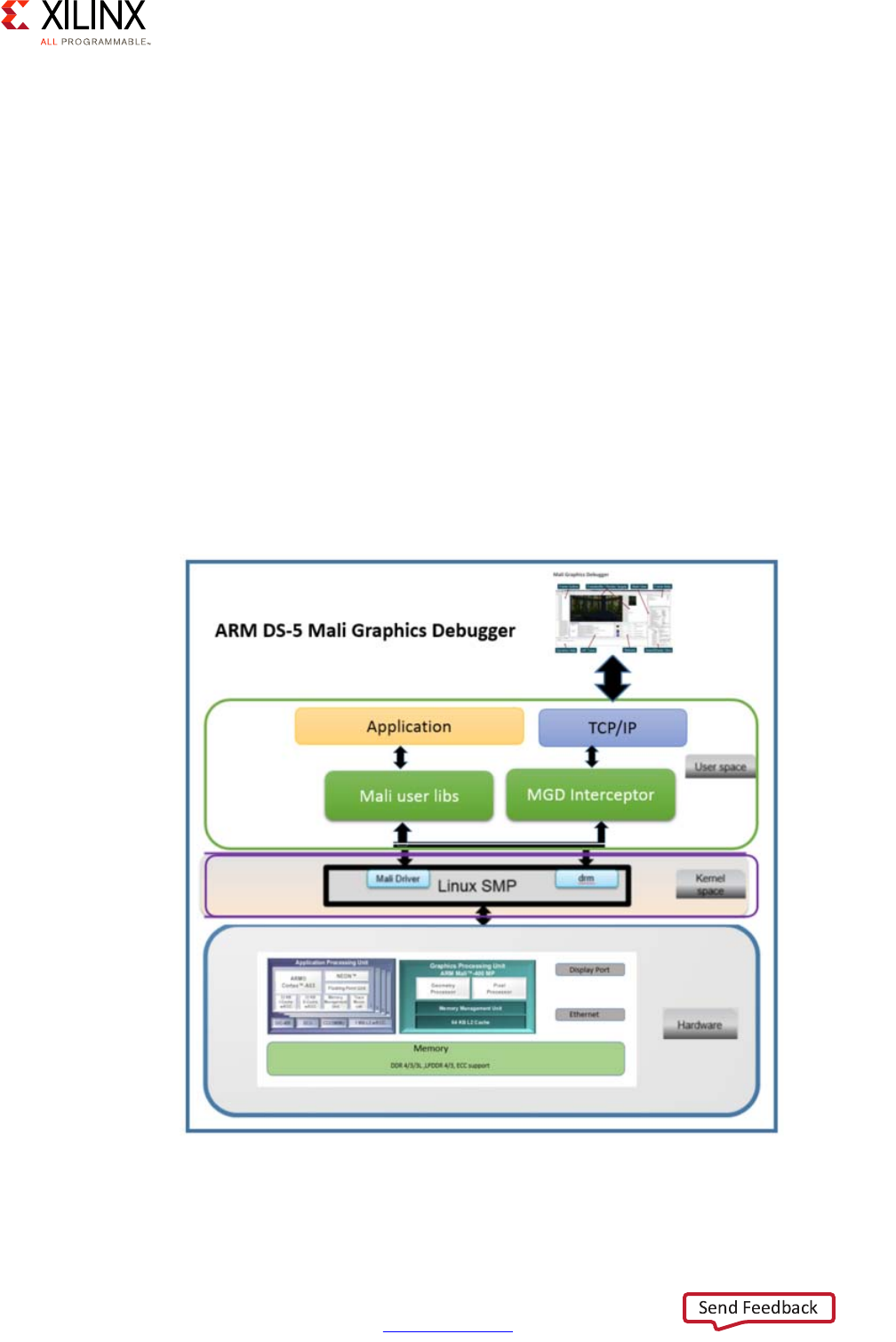

GPU . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 173

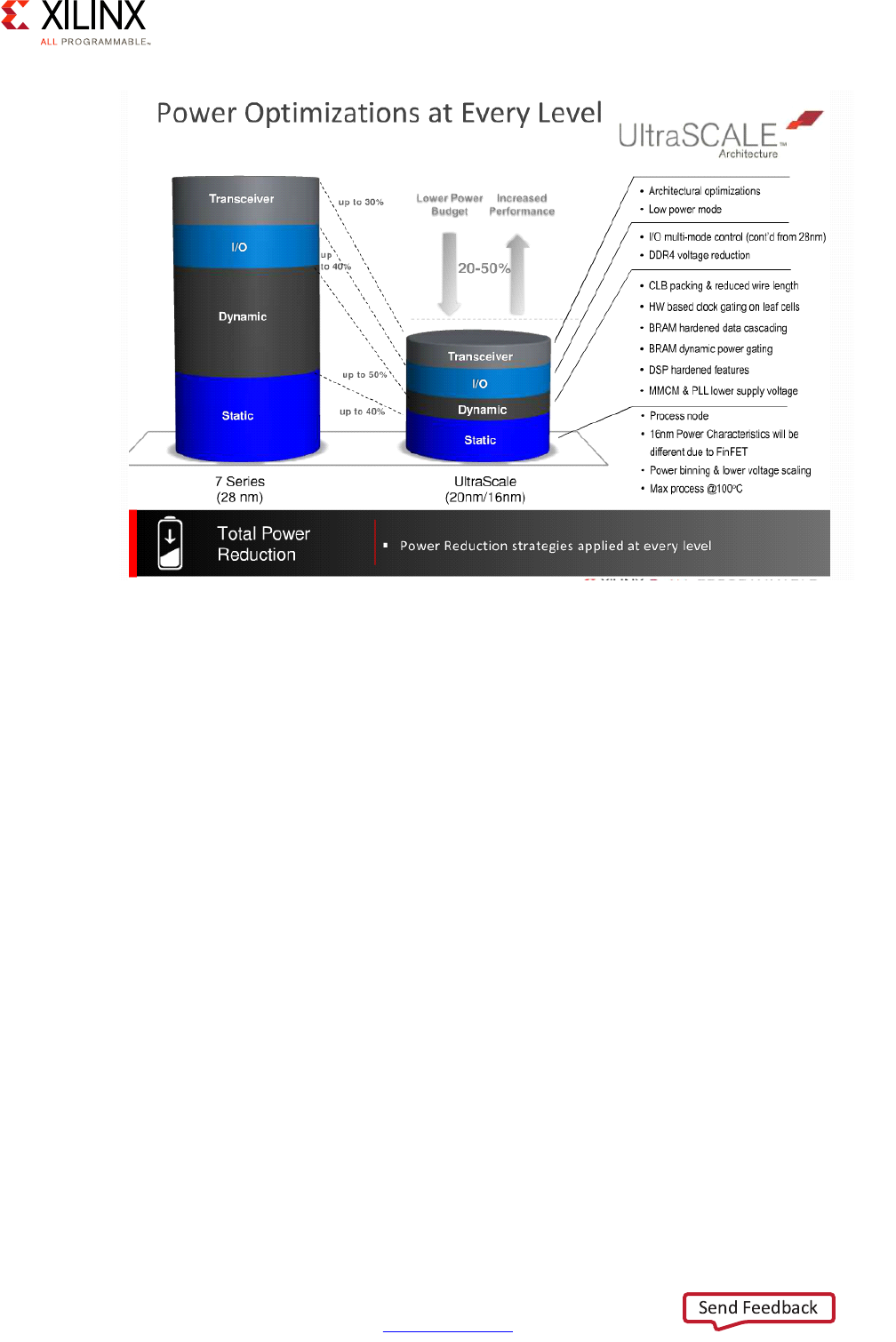

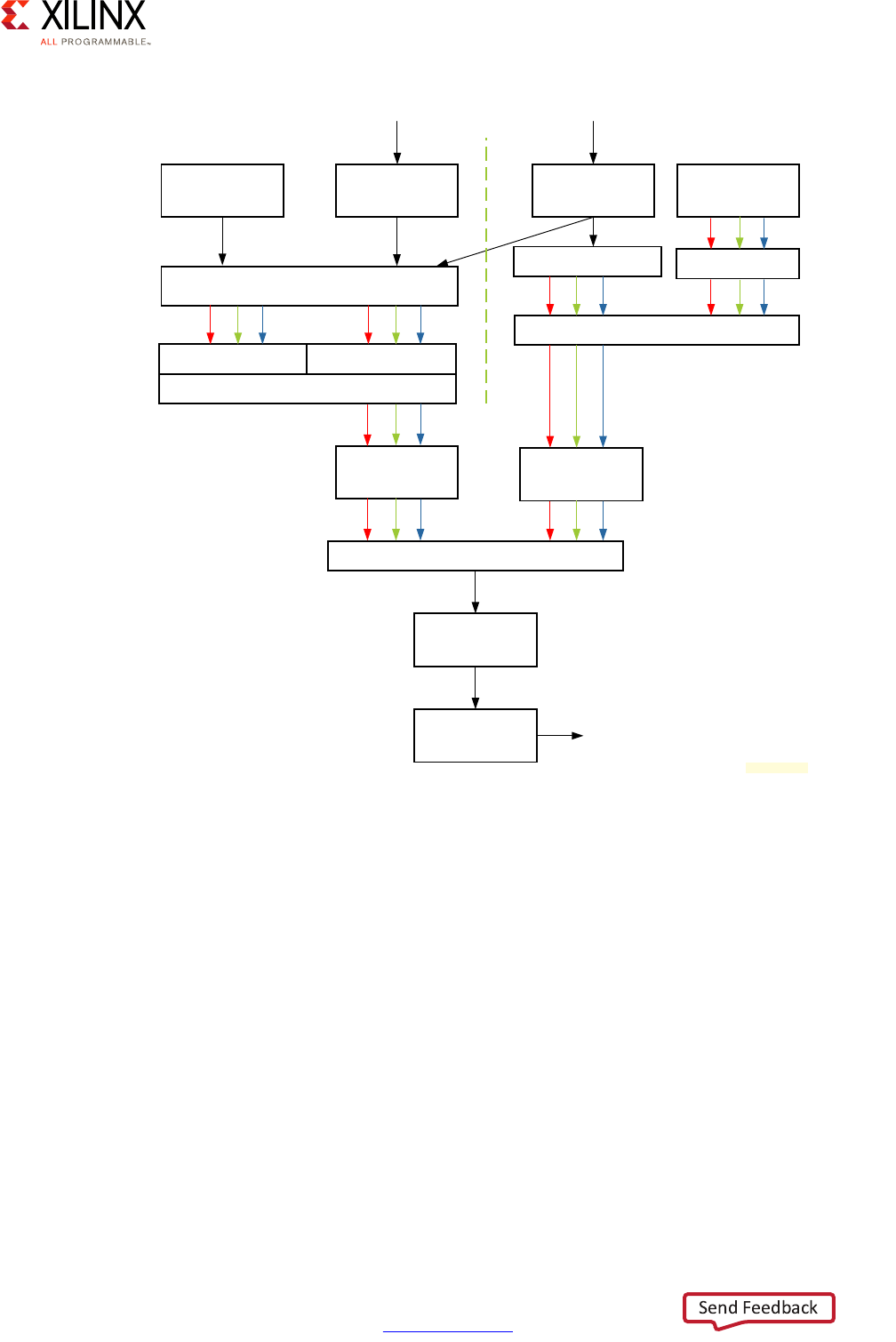

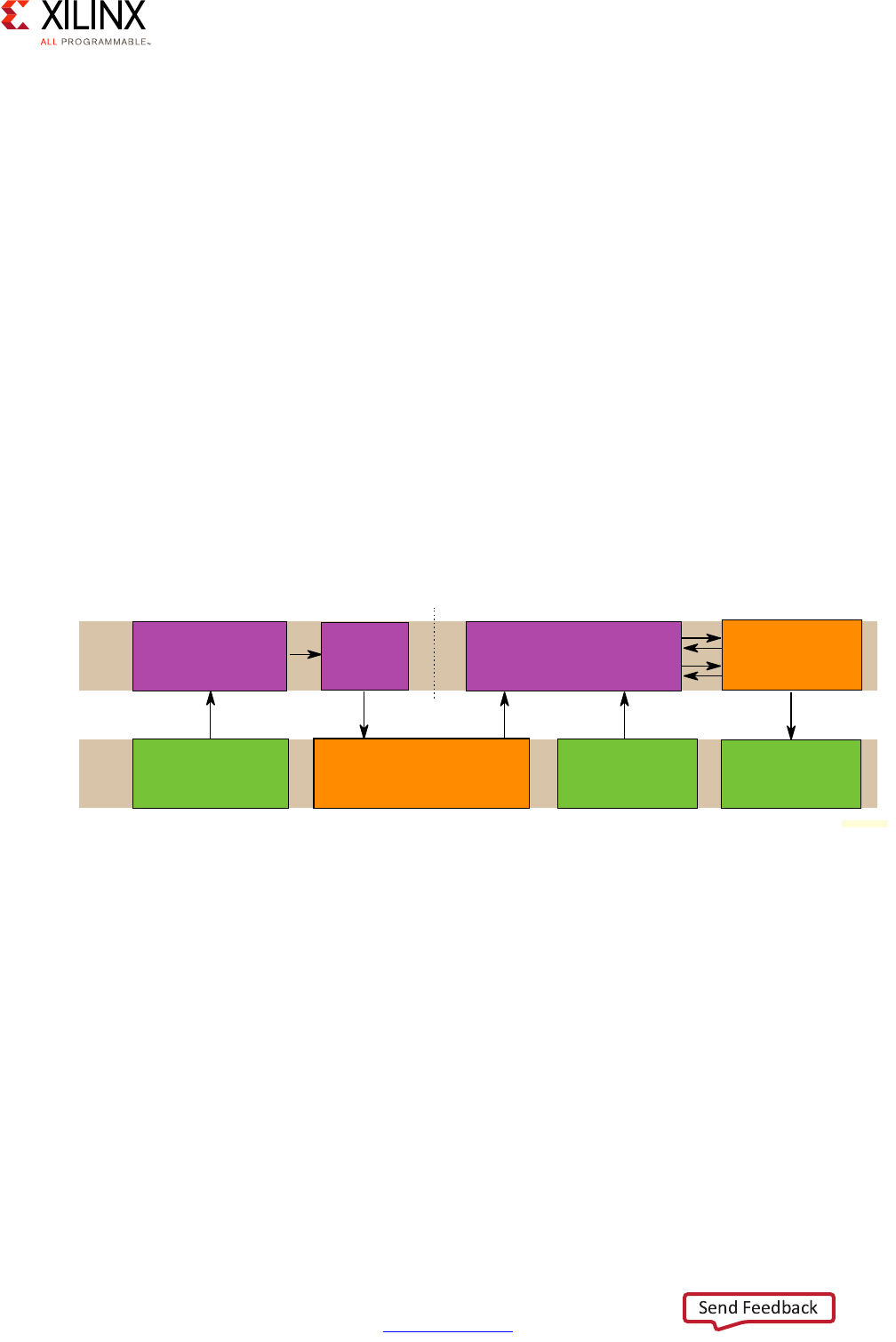

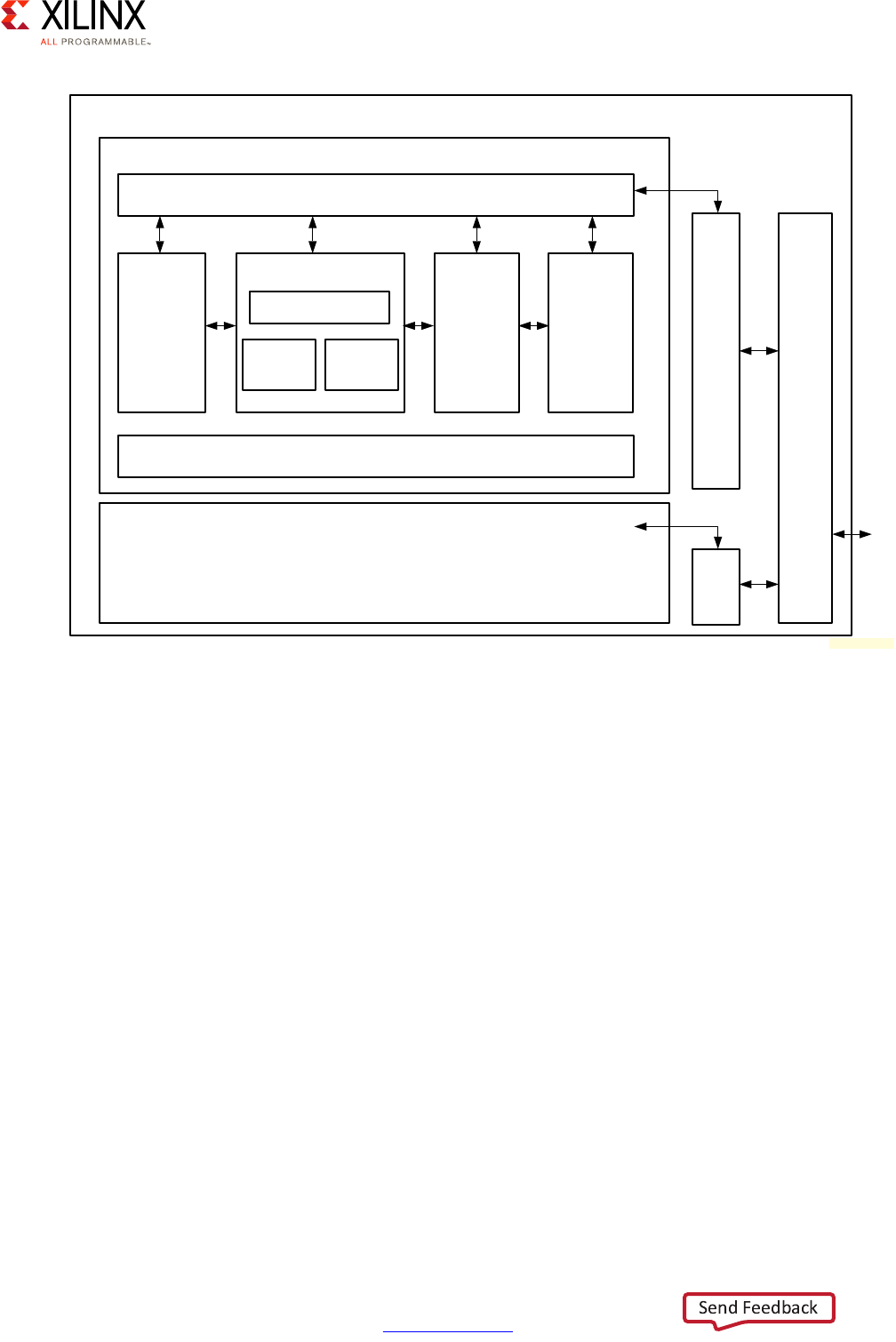

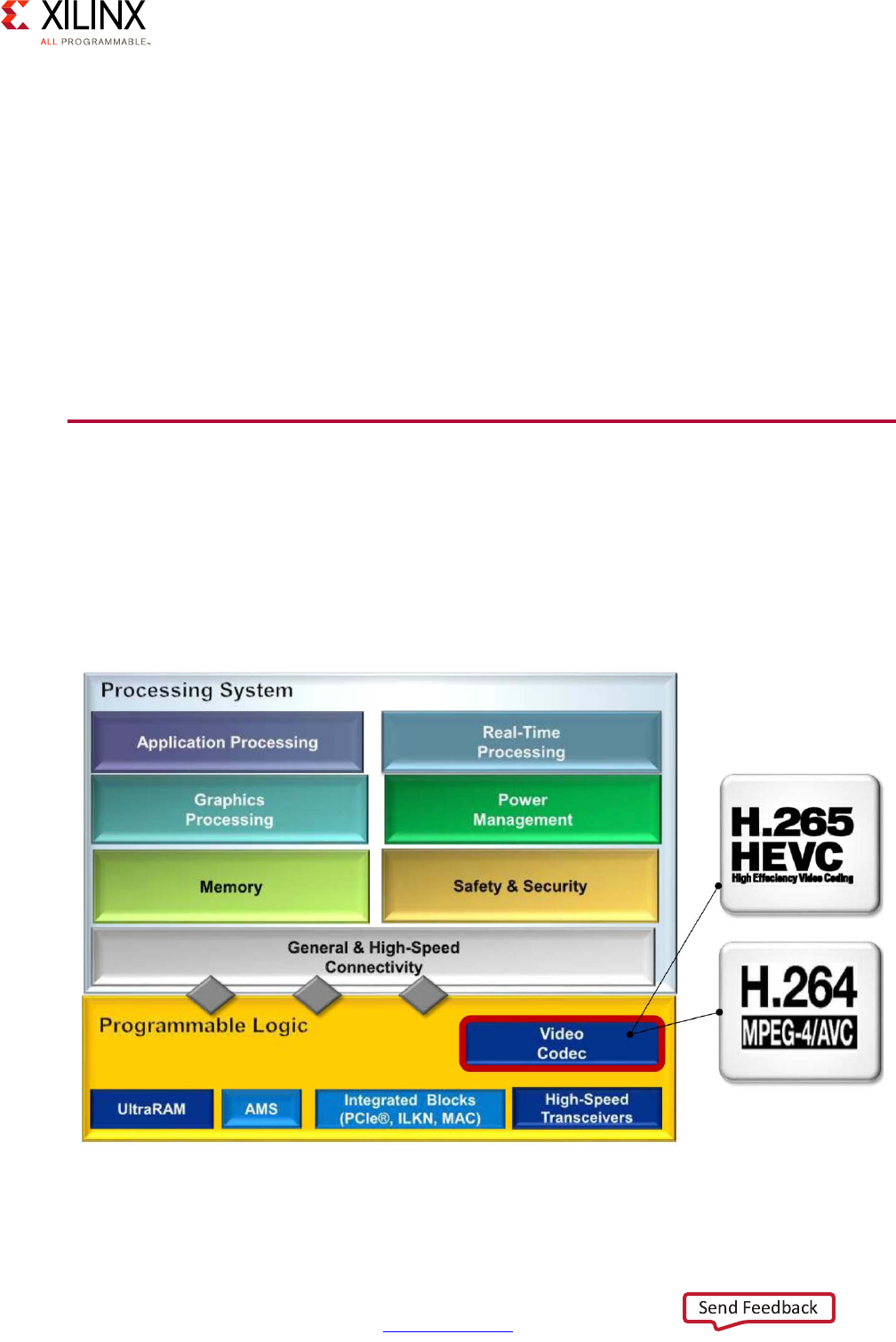

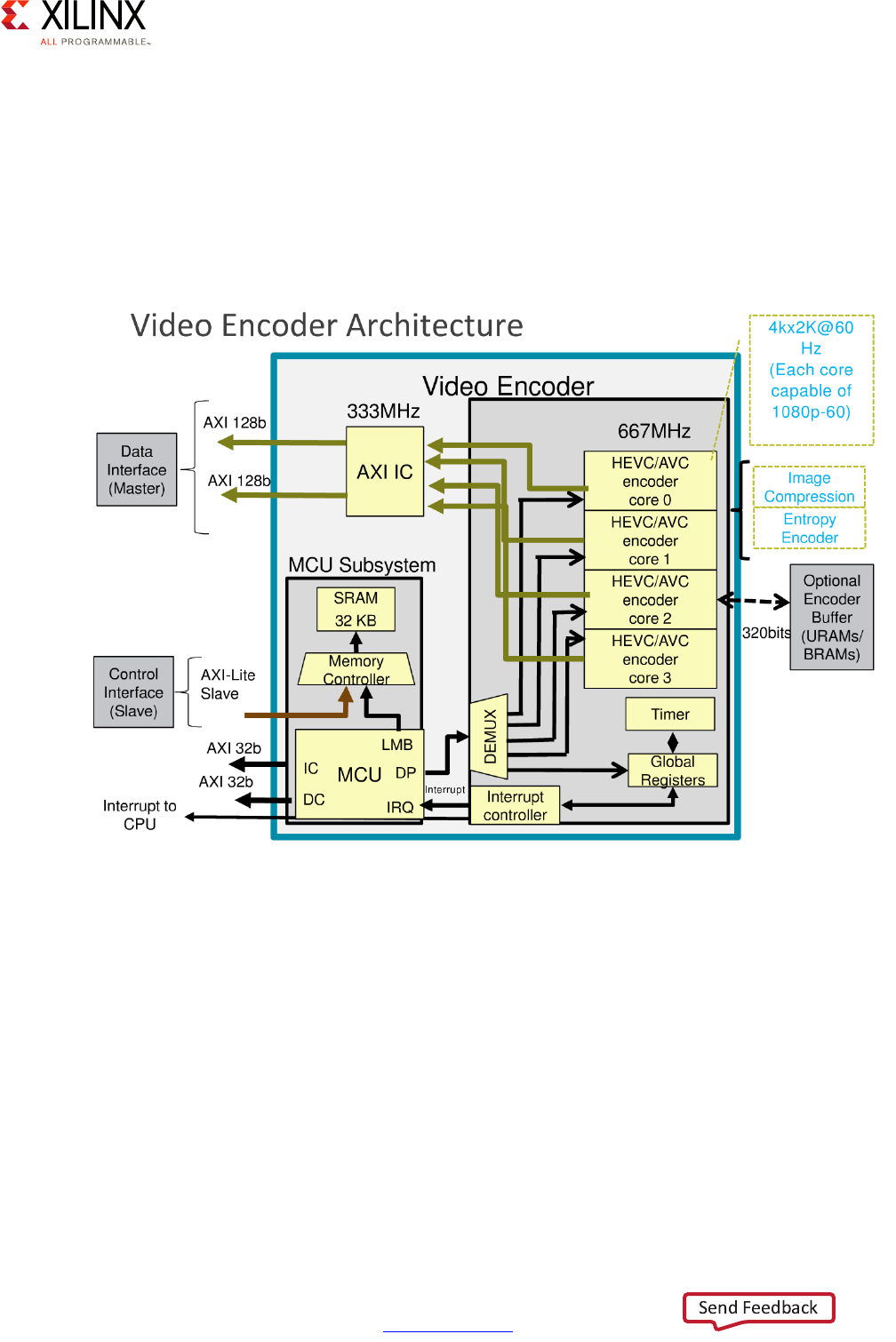

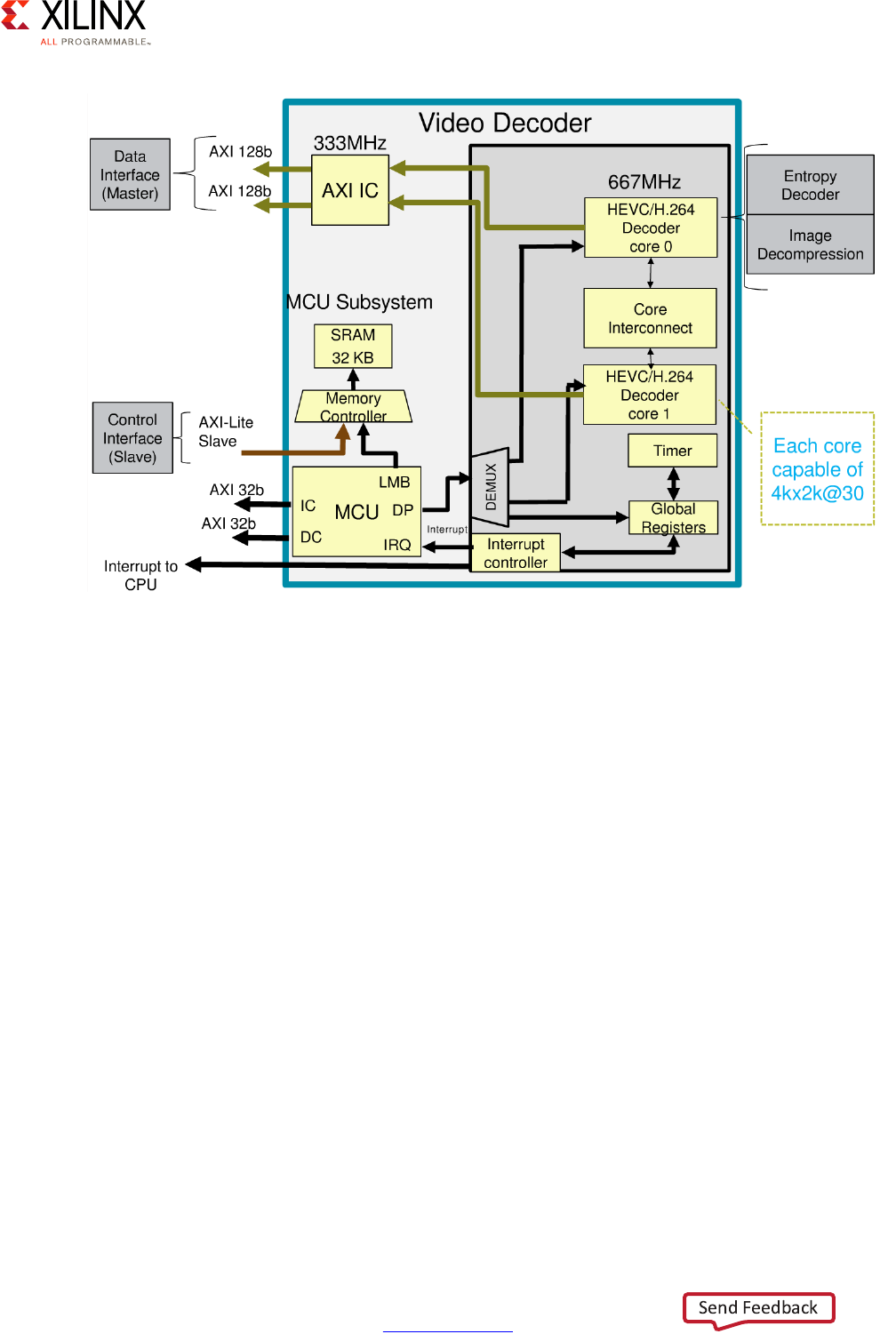

VCU . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 181

Chapter 10: Peripherals

Defining Your Peripherals Needs . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 187

Peripherals Methodology . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 188

GPIO . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 191

I2C . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 194

SPI . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 195

UART . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 196

CAN Controller . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 197

NAND . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 201

SD/SDIO/eMMC . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 202

Quad-SPI . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 203

Gigabit Ethernet Controller . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 206

USB . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 209

PCI Express . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 211

SATA . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 213

DisplayPort . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 214

Appendix A: Additional Resources and Legal Notices

Xilinx Resources . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 215

Solution Centers. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 215

Documentation Navigator and Design Hubs . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 215

References . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 216

Please Read: Important Legal Notices . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 217

Zynq UltraScale+ MPSoC Embedded Design Methodology Guide 6

UG1228 (v1.0) March 31, 2017 www.xilinx.com

Chapter 1

Introduction

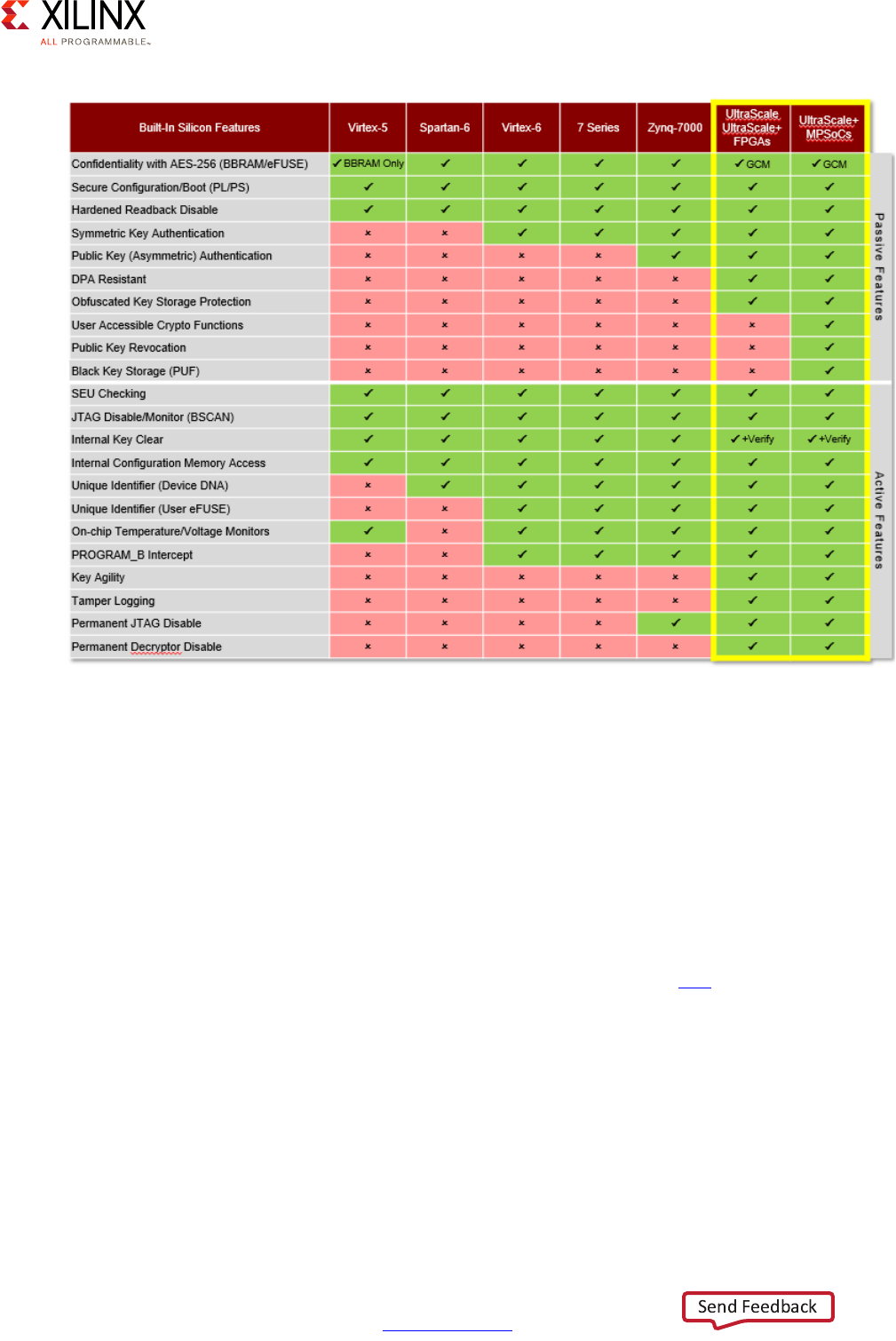

The Zynq® UltraScale+™ MPSoC platform offers designers the first truly all-programmable,

heterogeneous, multiprocessing system-on-chip (SoC) device. Smart systems are increasing

in complexity with applications in the automotive industry, large database deployments,

and even space exploration, pushing the requirements of each new generation of SoC to its

limits. Requirements for increased power control, real-time applications, intensive graphical

capabilities, and processing power demand a platform with maximum flexibility. The Zynq

UltraScale+ MPSoC platform provides leading edge features that modern systems

designers demand.

Built on the next-generation 16 nm FinFET process node from Taiwan Semiconductor

Manufacturing Company (TSMC), the Zynq UltraScale+ MPSoC contains a scalable 32 or

64-bit multiprocessor CPU, dedicated hardened engines for real-time graphics and video

processing, advanced high-speed peripherals, and programmable logic. The platform

delivers maximum scalability through either dual or quad-core APU devices, offloading of

critical applications like graphics and video pipelining to dedicated processing blocks, and

the ability to turn blocks on and off through efficient power domains and gated power

islands. With a wide range of interconnect options, digital signal processing (DSP) blocks,

and programmable logic choices, the Zynq UltraScale+ MPSoC has the flexibility to fit a

diverse set of user application requirements.

This guide can be viewed as a toolbox for making decisions for a user design with respect

to the UltraScale+ MPSoC feature set. Please review the recommendations and trade-offs

carefully when determining what works best for your product. This guide is organized

around the Vector Methodology (described in Vector Methodology) to provide an initial

diagrammatic view of system design requirements mapped against the Zynq UltraScale+

MPSoC primary features. The Vector Methodology does not ensure maximum use of the

capabilities of the platform, but rather provides a graphical representation of the trade-offs

and solutions a user can make when building their product on the platform. This can result

in the de-prioritization of certain platform features within the context of the overall

solution. Using the Vector Methodology allows designers to accurately target other team

members, such as Hardware Designers, Architects, and Software Engineers, to the relevant

sections of the methodology applicable to their roles.

Zynq UltraScale+ MPSoC Embedded Design Methodology Guide 7

UG1228 (v1.0) March 31, 2017 www.xilinx.com

Chapter 1: Introduction

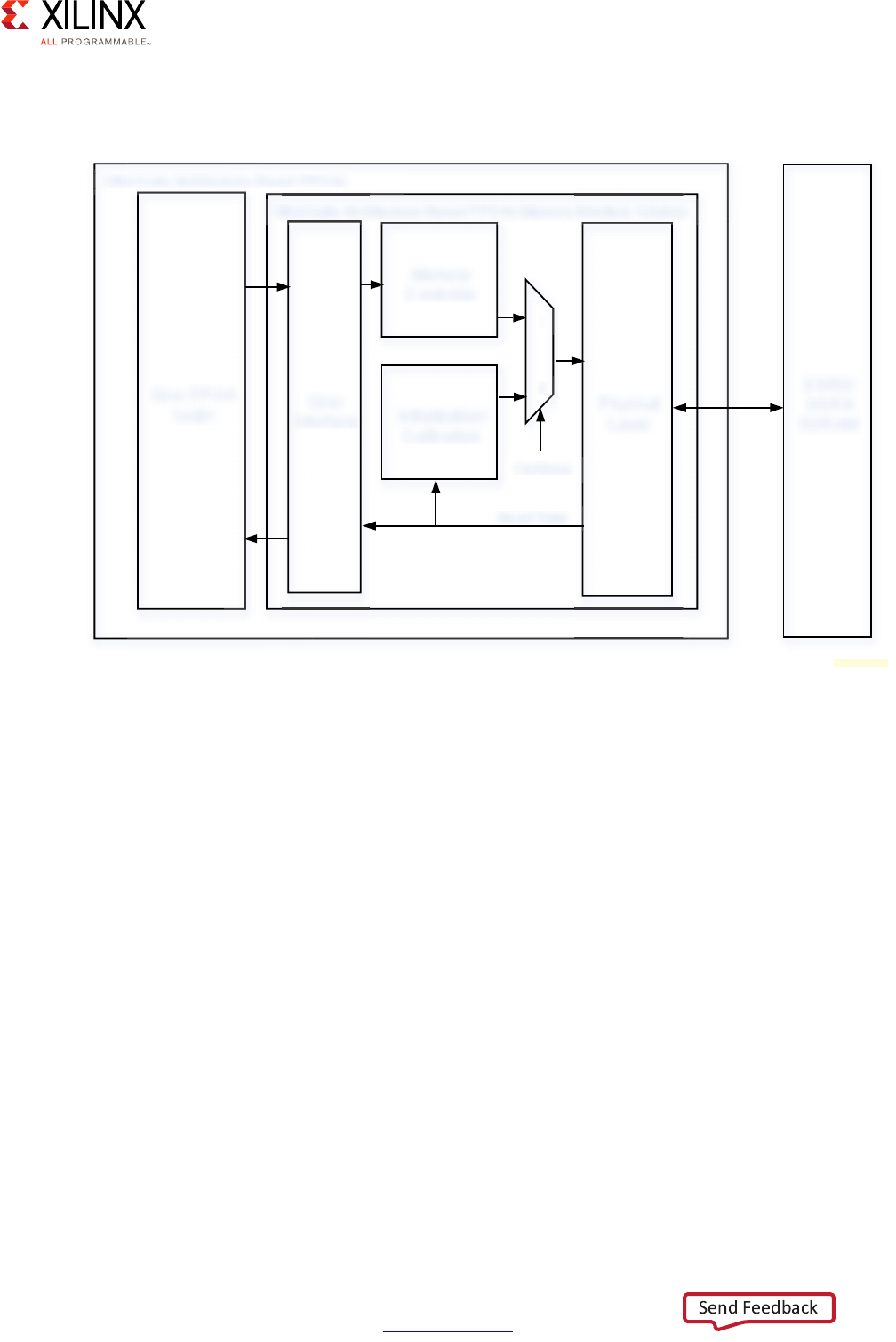

Block Diagram

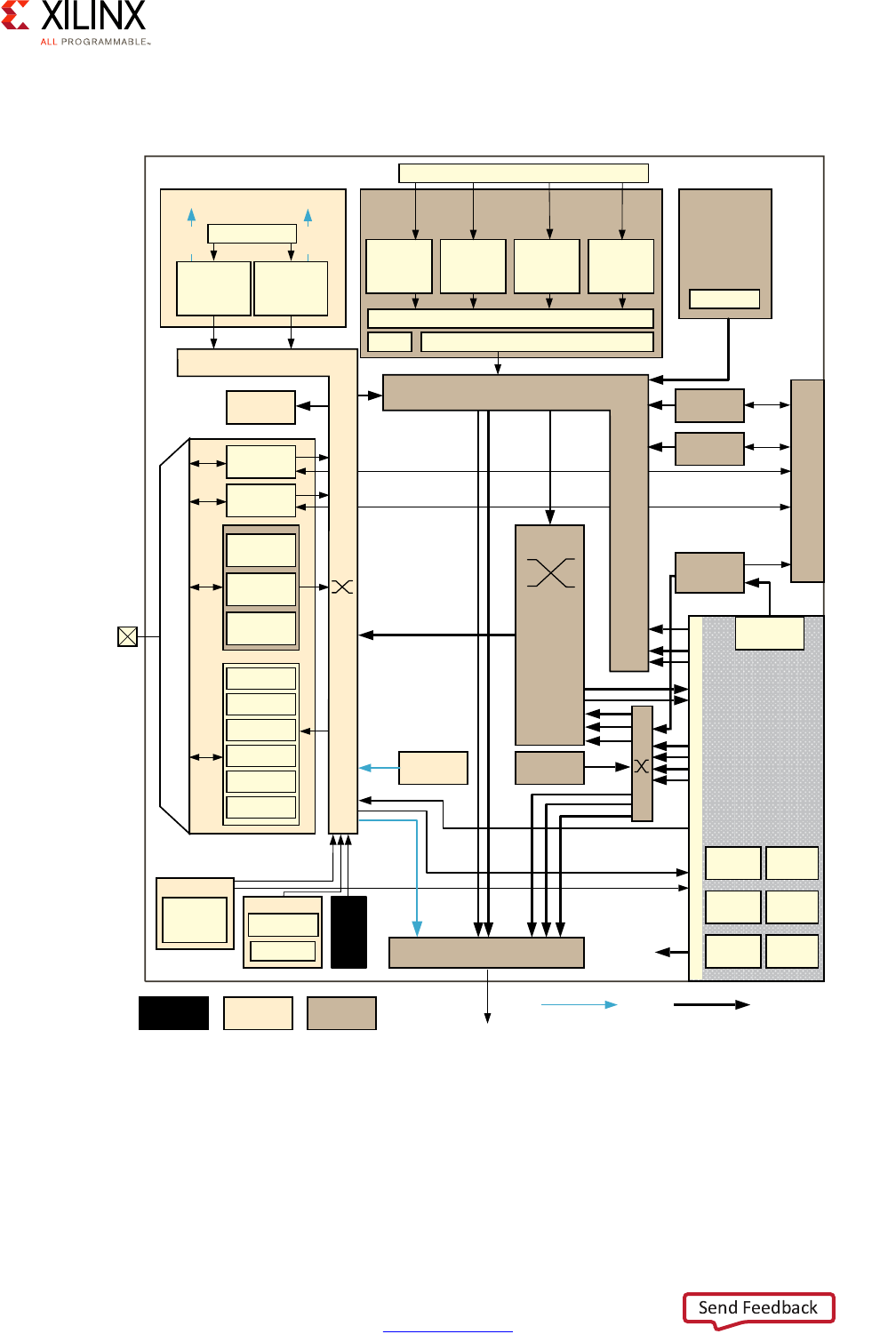

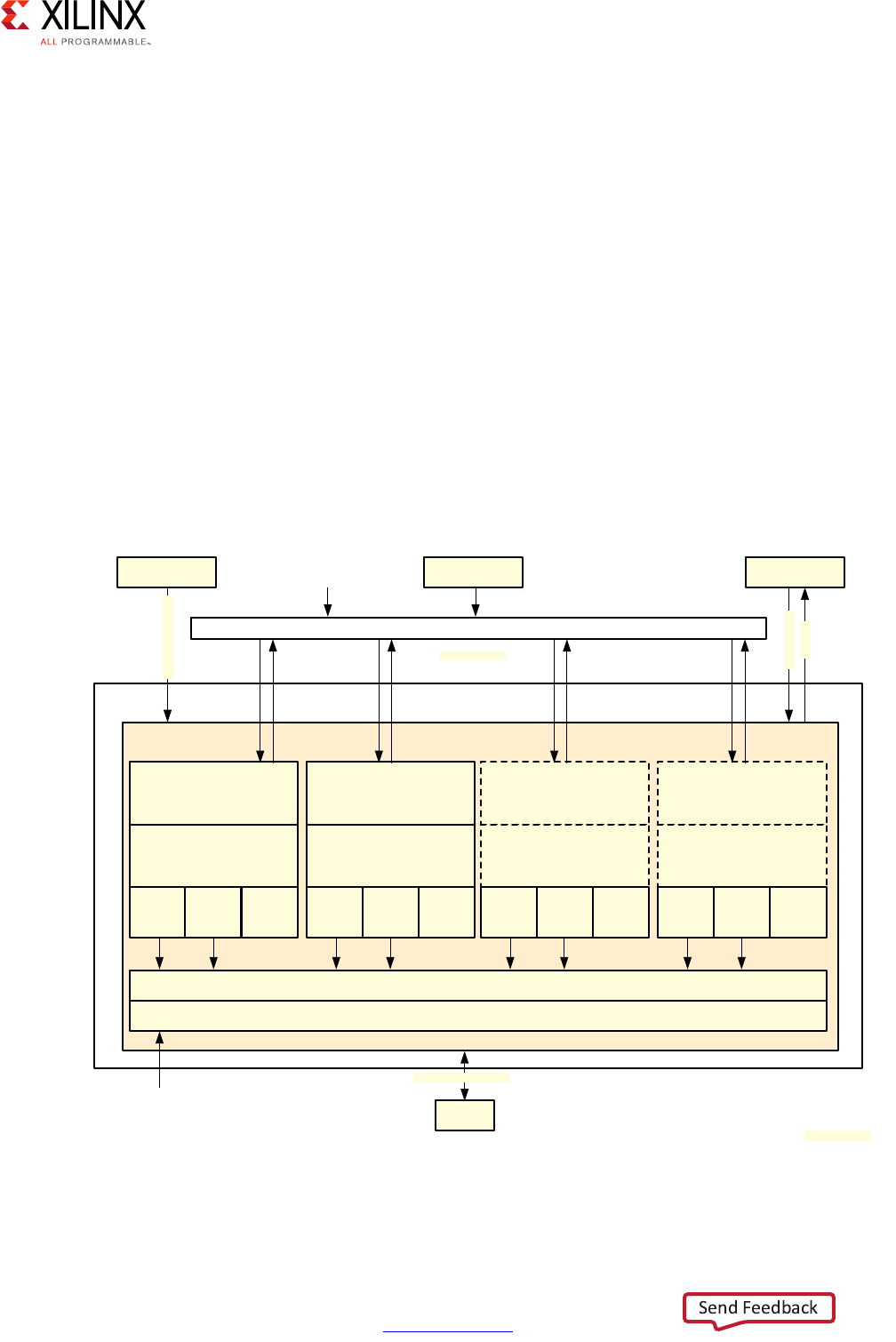

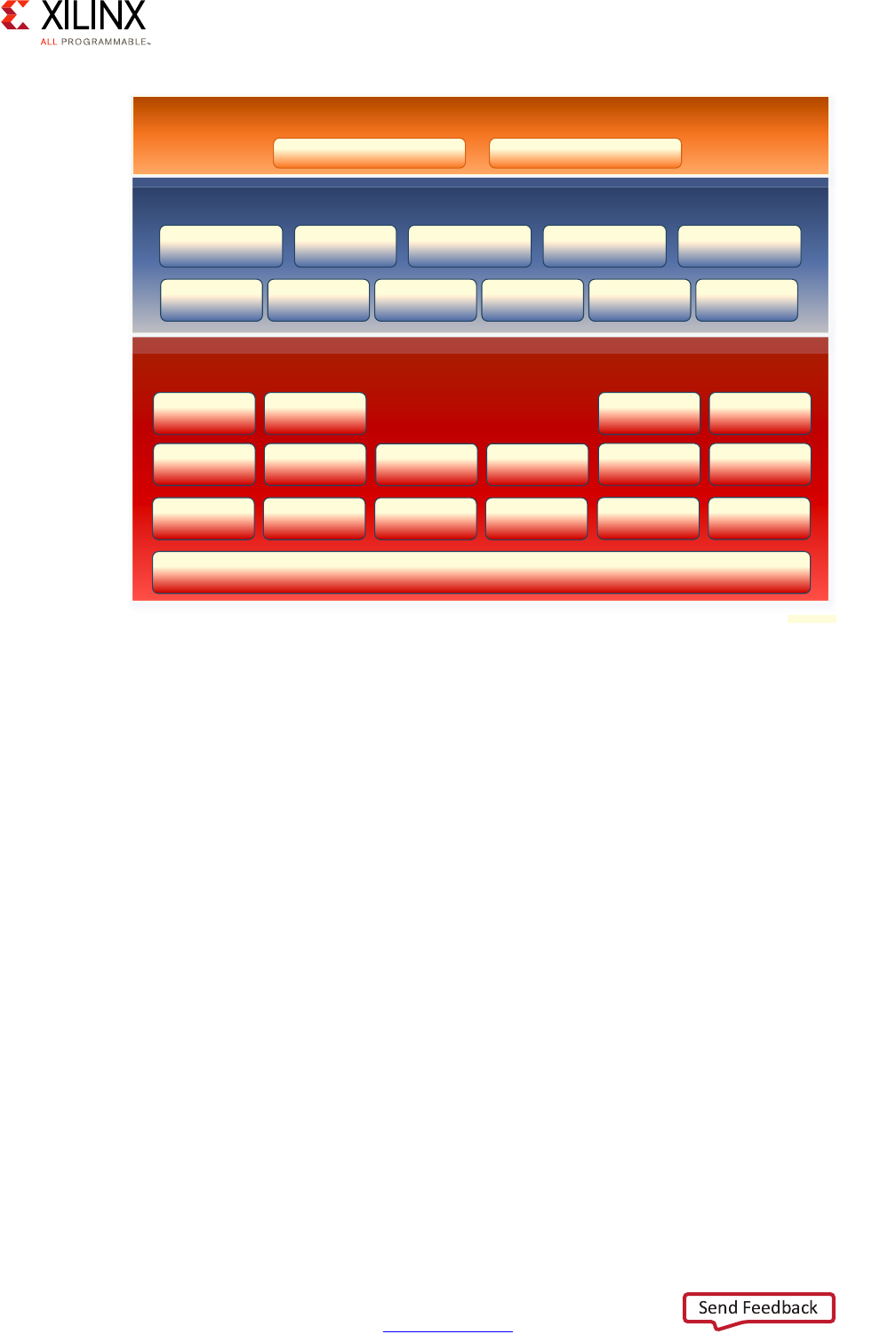

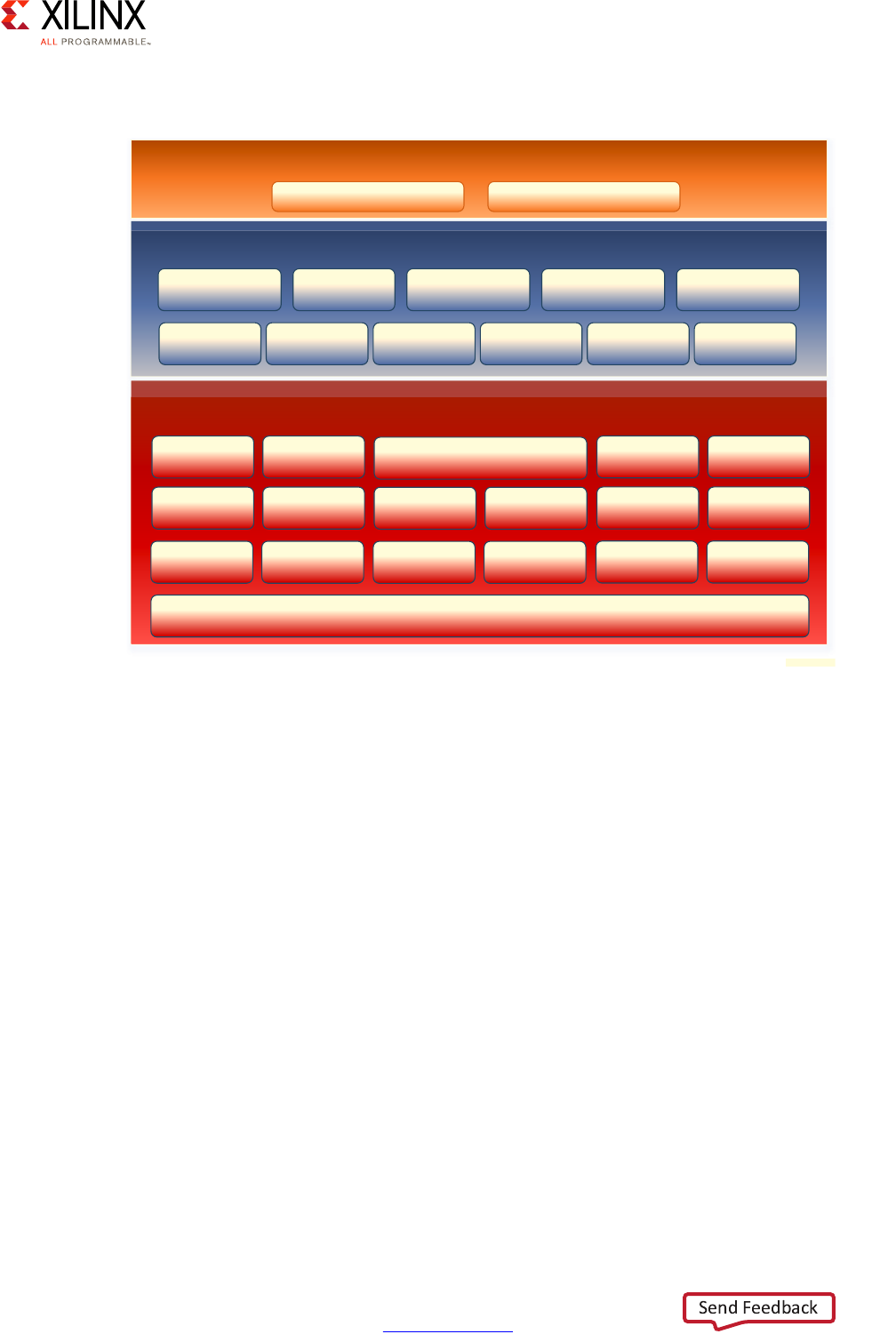

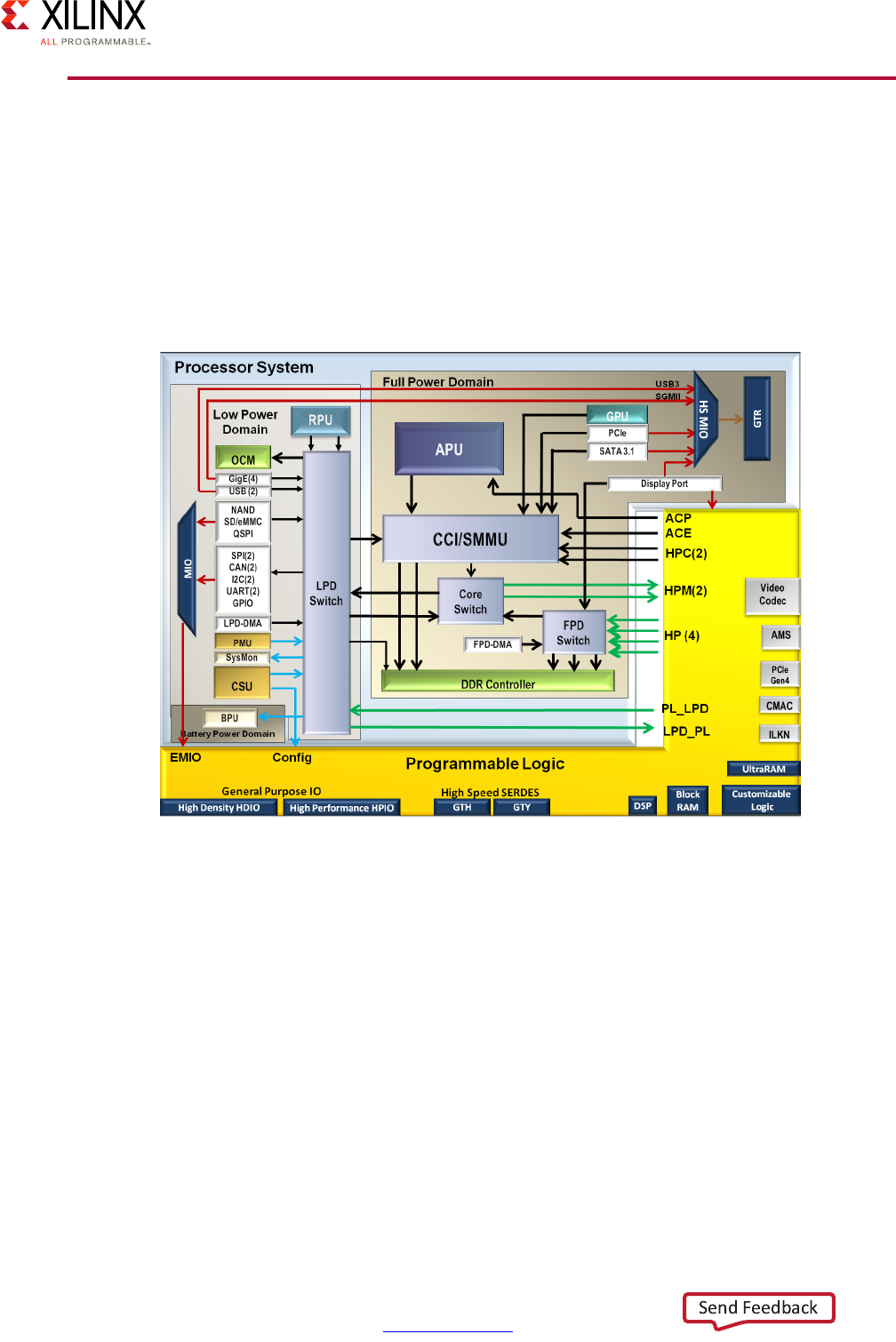

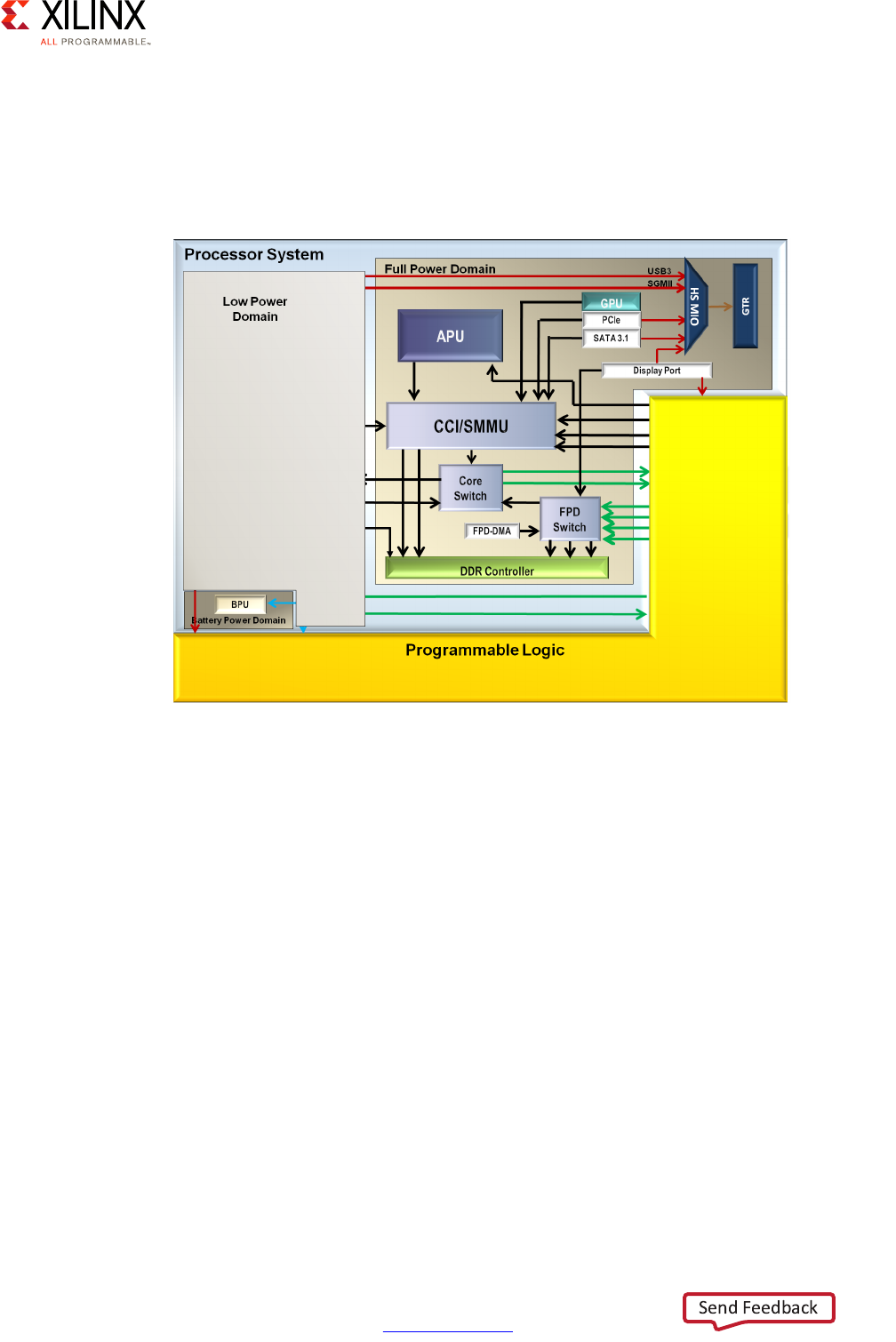

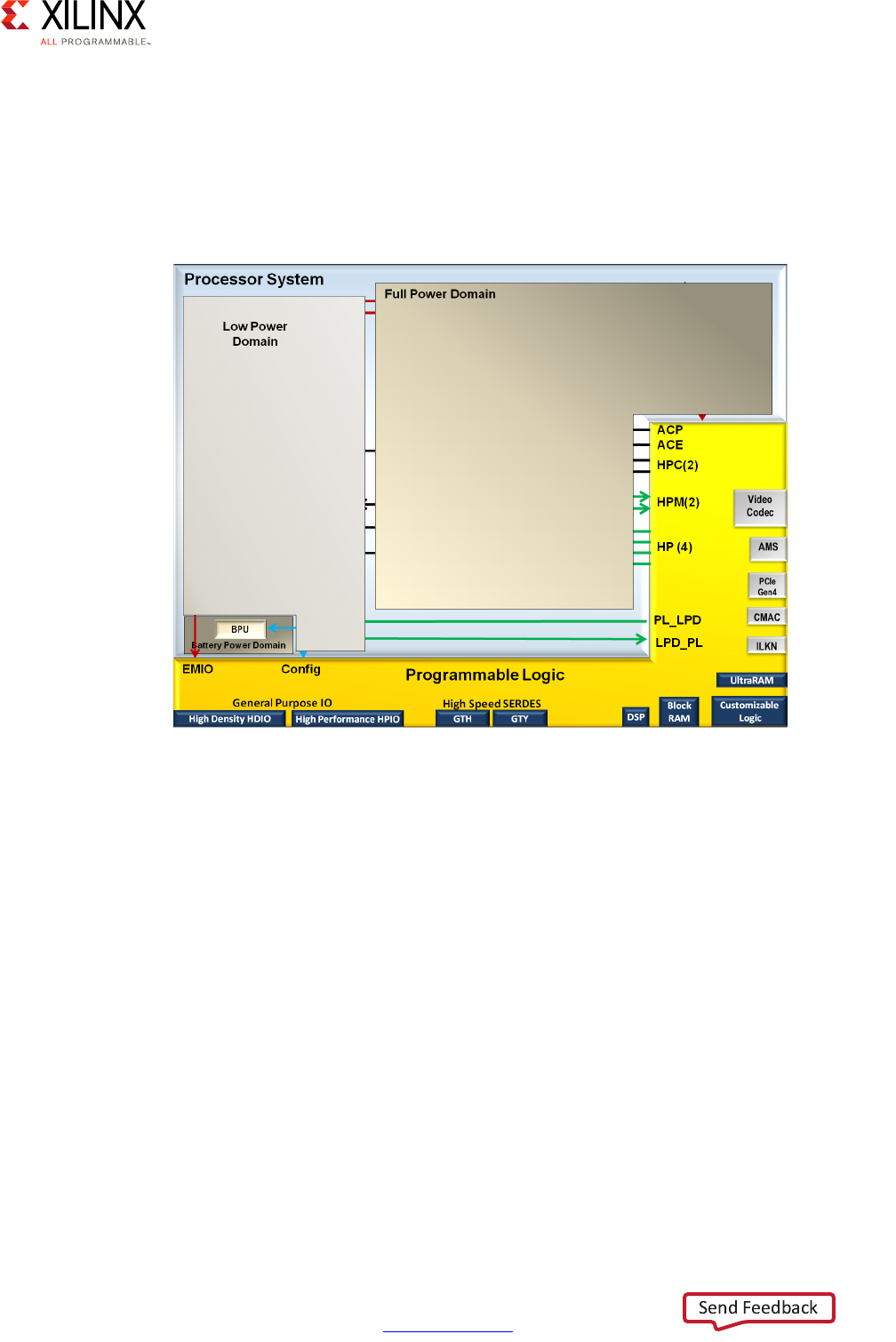

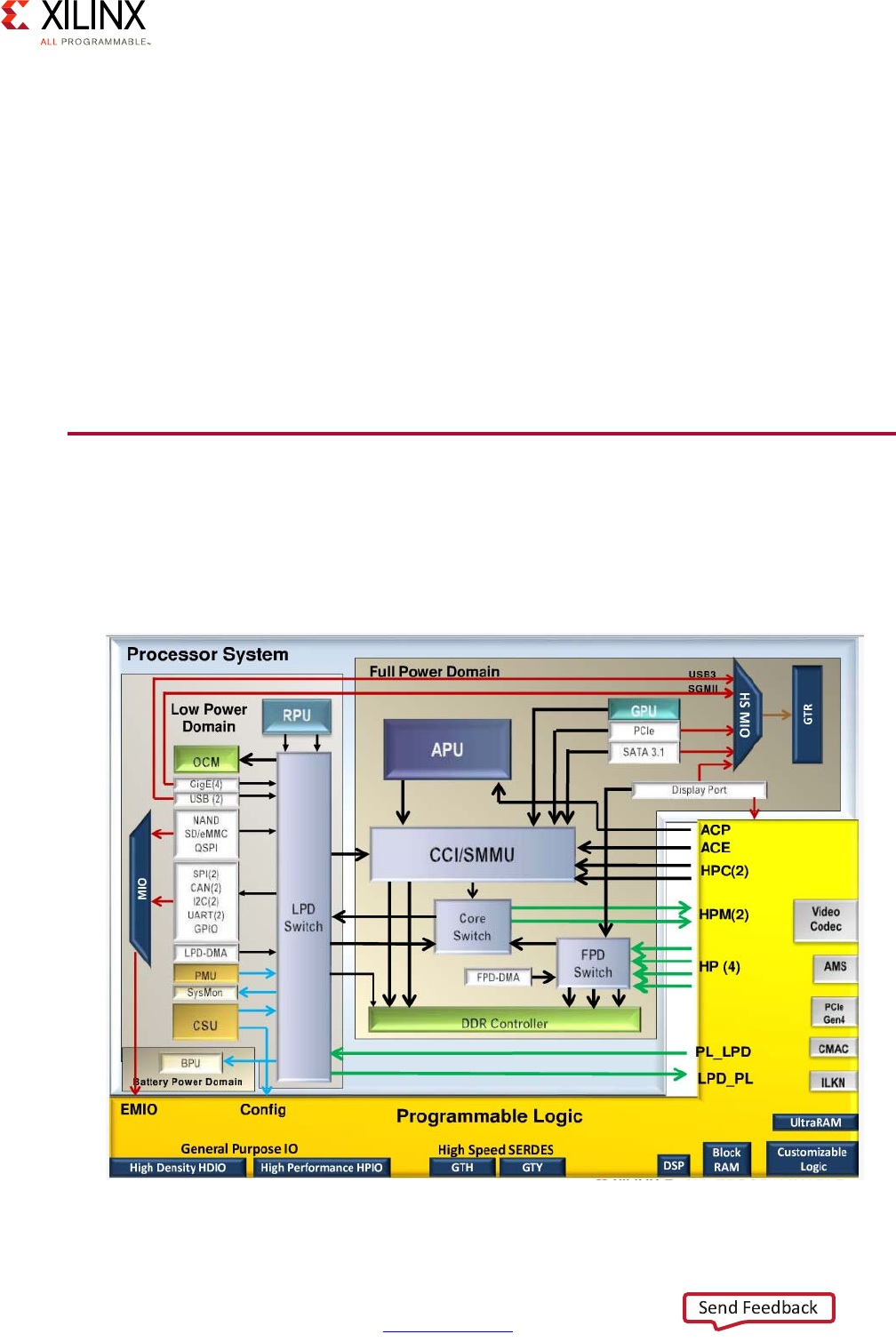

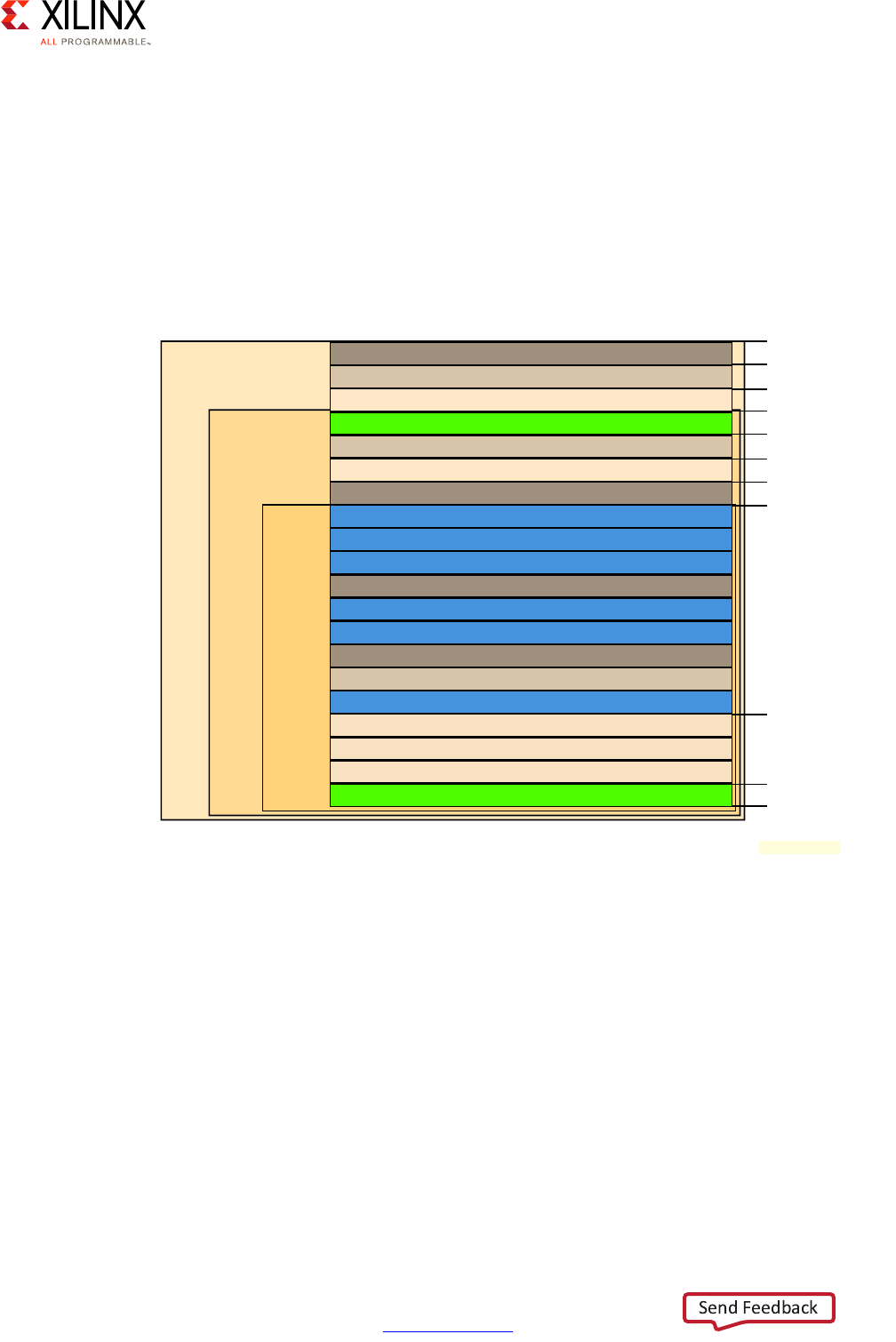

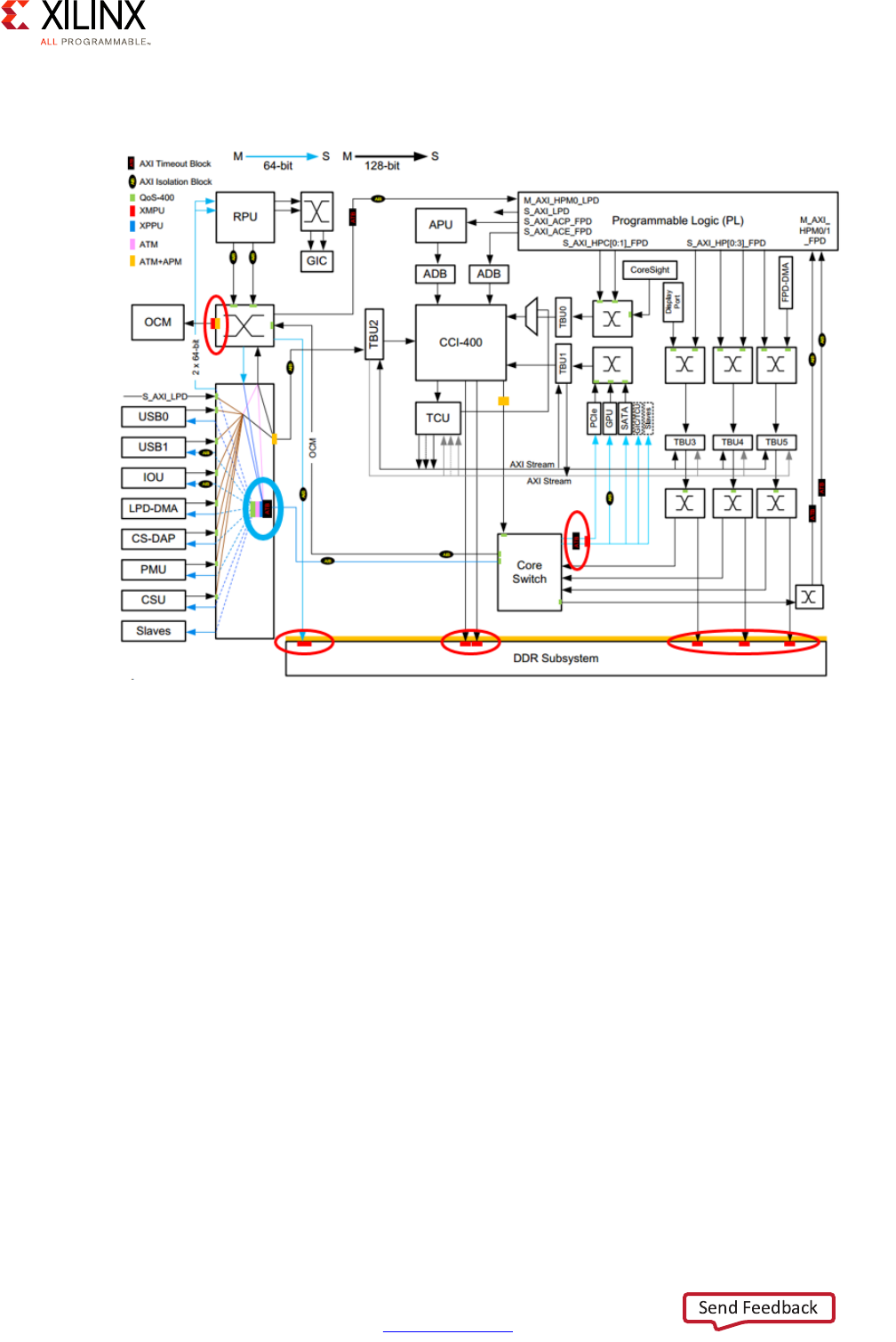

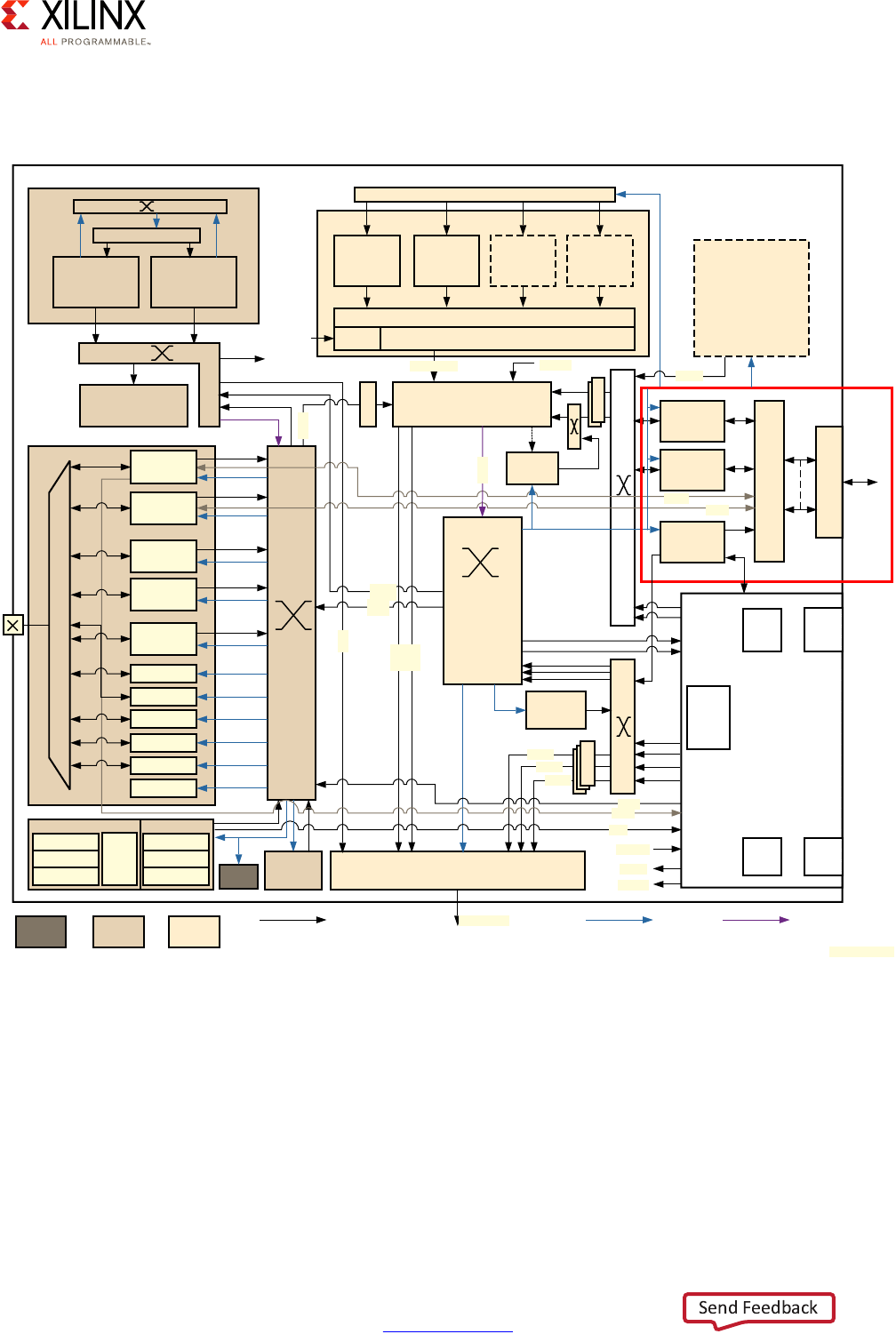

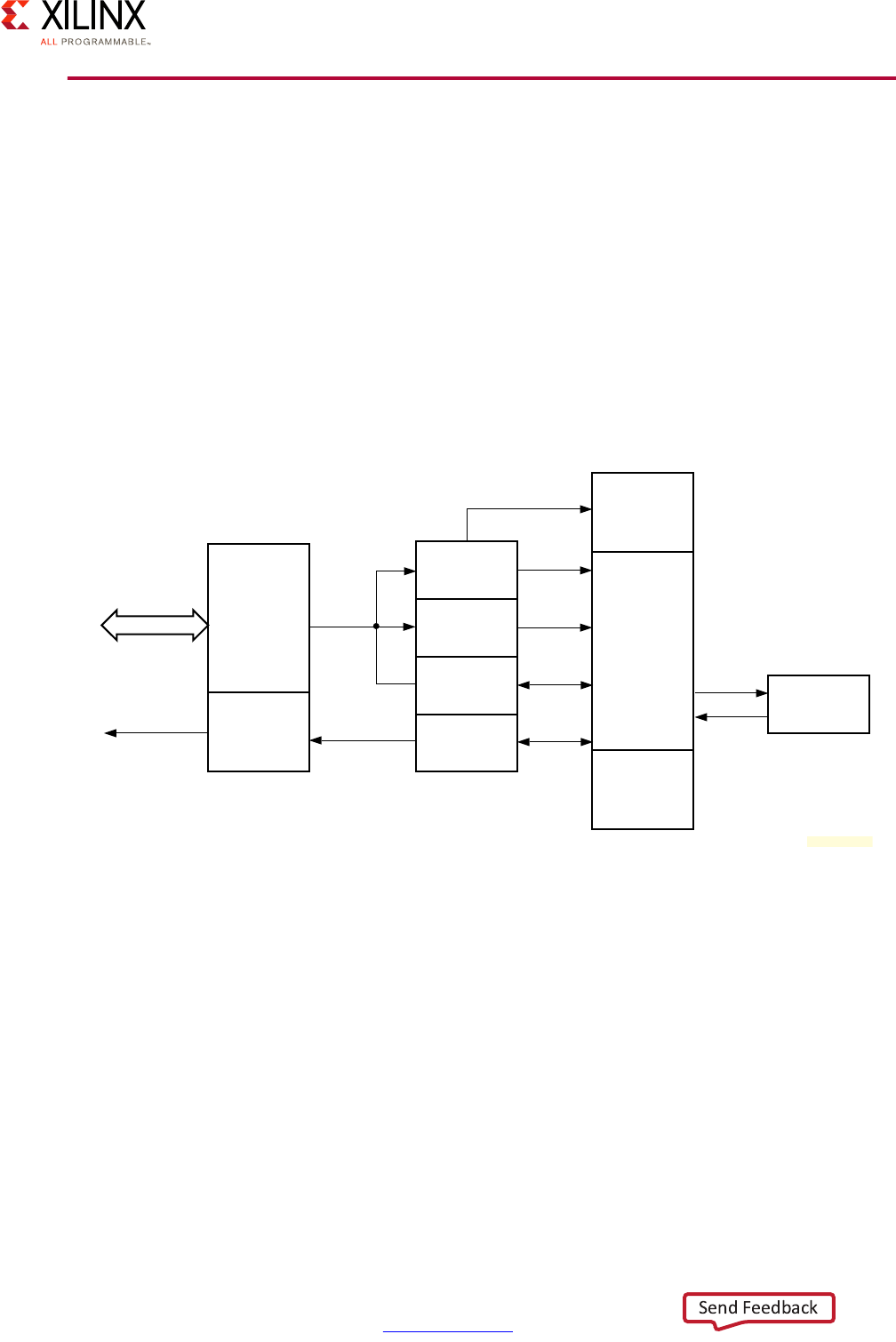

A Zynq UltraScale+ MPSoC device consists of two major underlying processing system (PS)

and programmable logic (PL) blocks in two isolated power domains.

PS acts as one standalone MPSoC device and is able to boot and support all the features

shown in Figure 1-1, page 8 without powering on the PL. Each of the individual embedded

blocks are covered in this manual.

The Zynq UltraScale+ MPSoC device has four different power domains:

•Low-power domain (LPD)

• Full-power domain (FPD)

• PL power domain (PLPD)

• Battery power domain (BPD)

Each power domain can be individually isolated. The platform management unit (PMU) on

the LPD facilitates the isolation of each of the power domains. Since each power domain

can be individually isolated, functional isolation (an important aspect of safety and security

applications) is possible. Additionally, the isolation can be automatically turned on when

Zynq UltraScale+ MPSoC Embedded Design Methodology Guide 8

UG1228 (v1.0) March 31, 2017 www.xilinx.com

Chapter 1: Introduction

one of the power supplies of the corresponding power domain unintentionally powers

down.

X-Ref Target - Figure 1-1

Figure 1-1: Block Diagram

RPU

256 KB

OCM

LPD-DMA

CSU

PMU

Processing System

Cortex-R5

32 KB I/D

128 KB TCM

Cortex-R5

32 KB I/D

128 KB TCM

4 x 1GE

APU

Cortex-A53

32 KB I/D

Cortex-A53

32 KB I/D

Cortex-A53

32 KB I/D

Cortex-A53

32 KB I/D

GIC

SCU

ACP 1 MB L2

GPU

Mali-400 MP2

64 KB L2

2 x USB 3.0

NAND x8

ONFI 3.1

2 x SD3.0/

eMMC4.51

Quad-SPI

x 8

2 x SPI

2 x CAN

2 x I2C

2 x UART

GPIOs

SYSMON

MIO

Central

Switch

FPD-DMA

VCU

H.264/H.265

PCIe

Gen4

DisplayPort

v1.2 x1, x2

2 x SATA

v3.0

PCIe Gen2

x1, x2, or x4

SHA3

AES-GCM

RSA

Processor

System BPU

DDRC (DDR4/3/3L, LPDDR3/4)

Programmable

Logic

128 KB RAM

PL_LPD HP

GIC

LLLP

LLLP

RGMII

ULPI

PS-GTR

SMMU/CCI

GFC

USB 3.0

SGMII

Low Power Switch

To ACP

Low Power Full Power

Battery

Power

32-bit/64-bit

64-bit

MS

128-bit

MS

UG1085_c1_01_091715

LPD_PL HPCHPM

GTY

Quad

GTH

Quad

Interlaken 100G

Ethernet

ACE

DisplayPort

Video and

Audio Interface

Zynq UltraScale+ MPSoC Embedded Design Methodology Guide 9

UG1228 (v1.0) March 31, 2017 www.xilinx.com

Chapter 1: Introduction

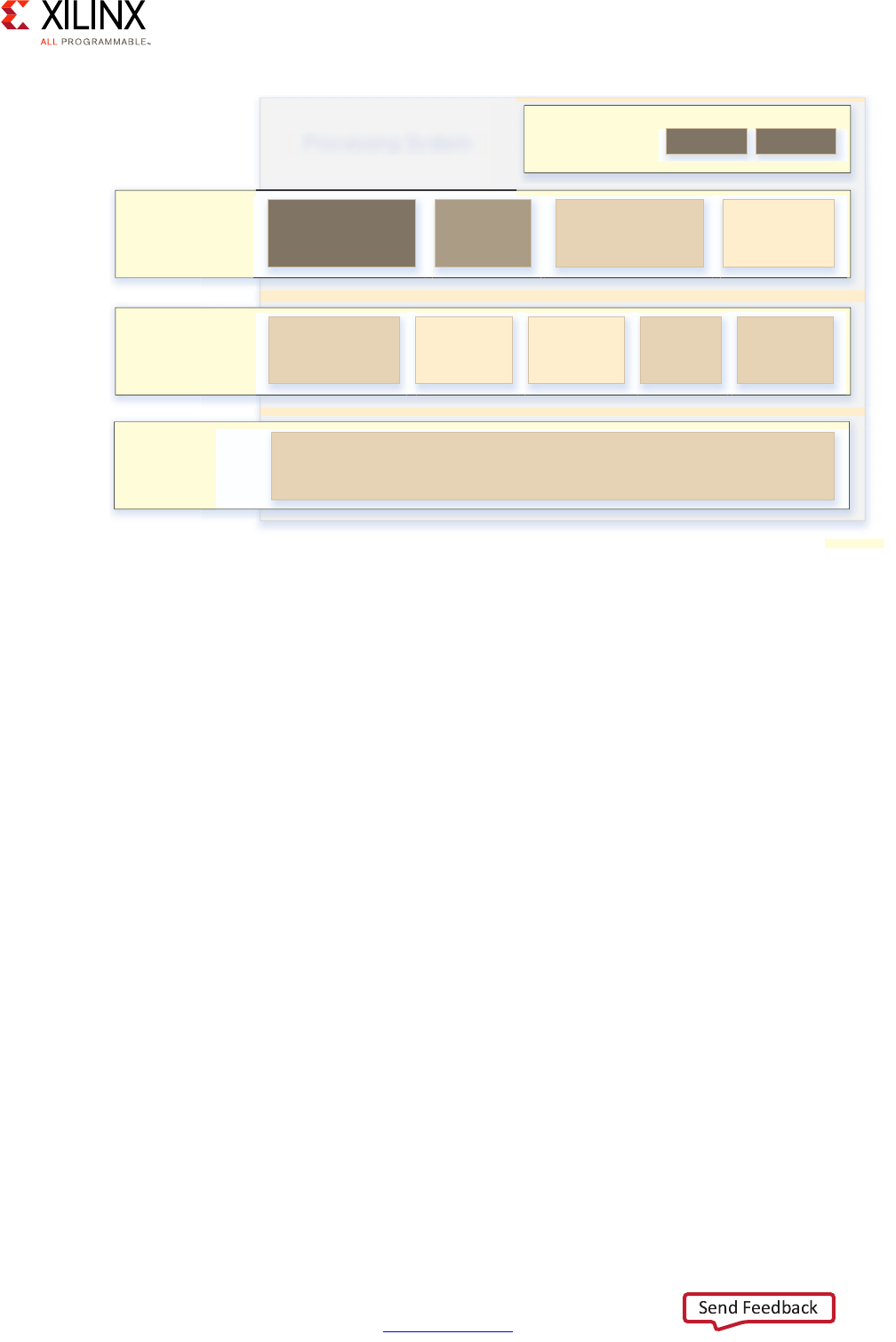

Vector Methodology

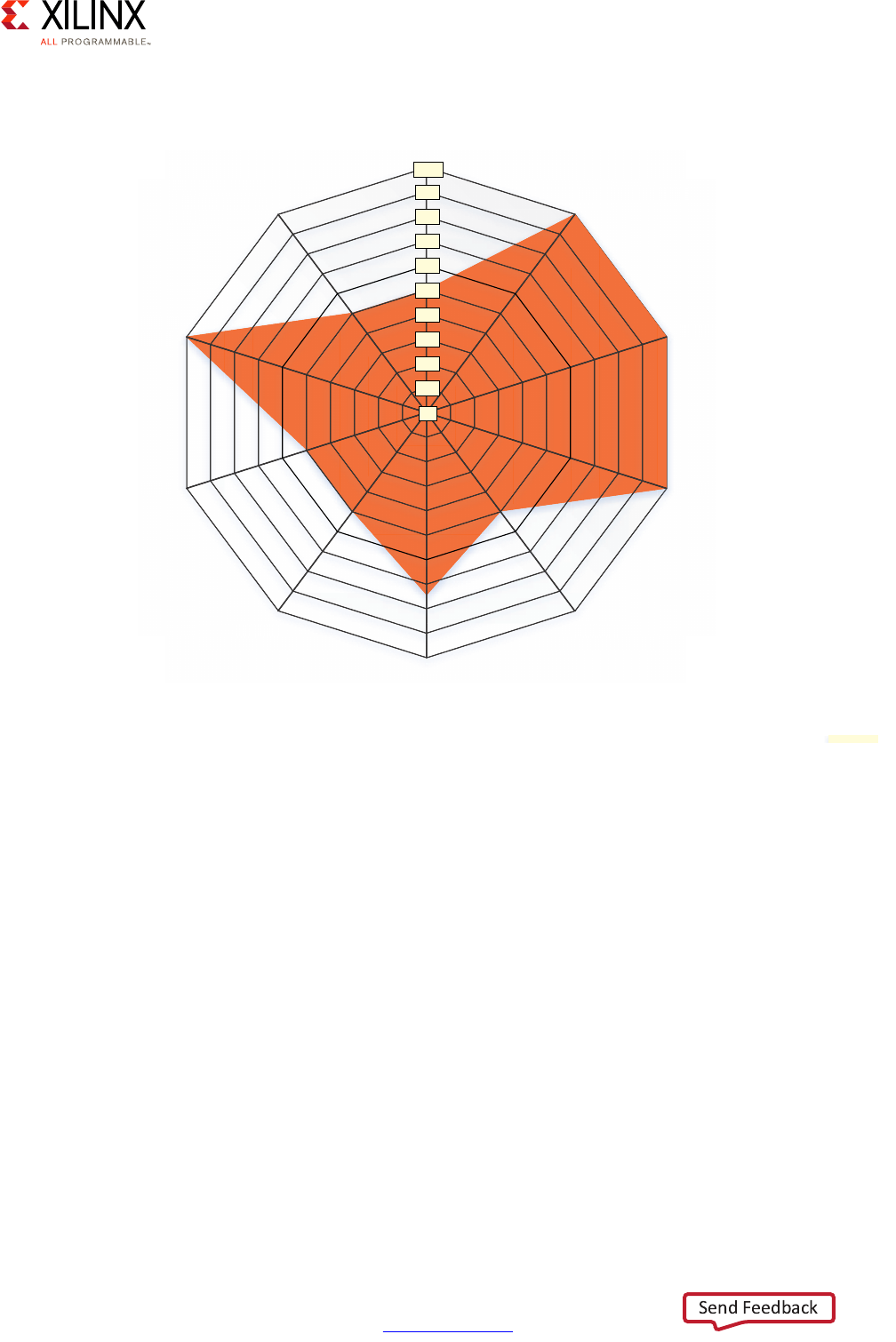

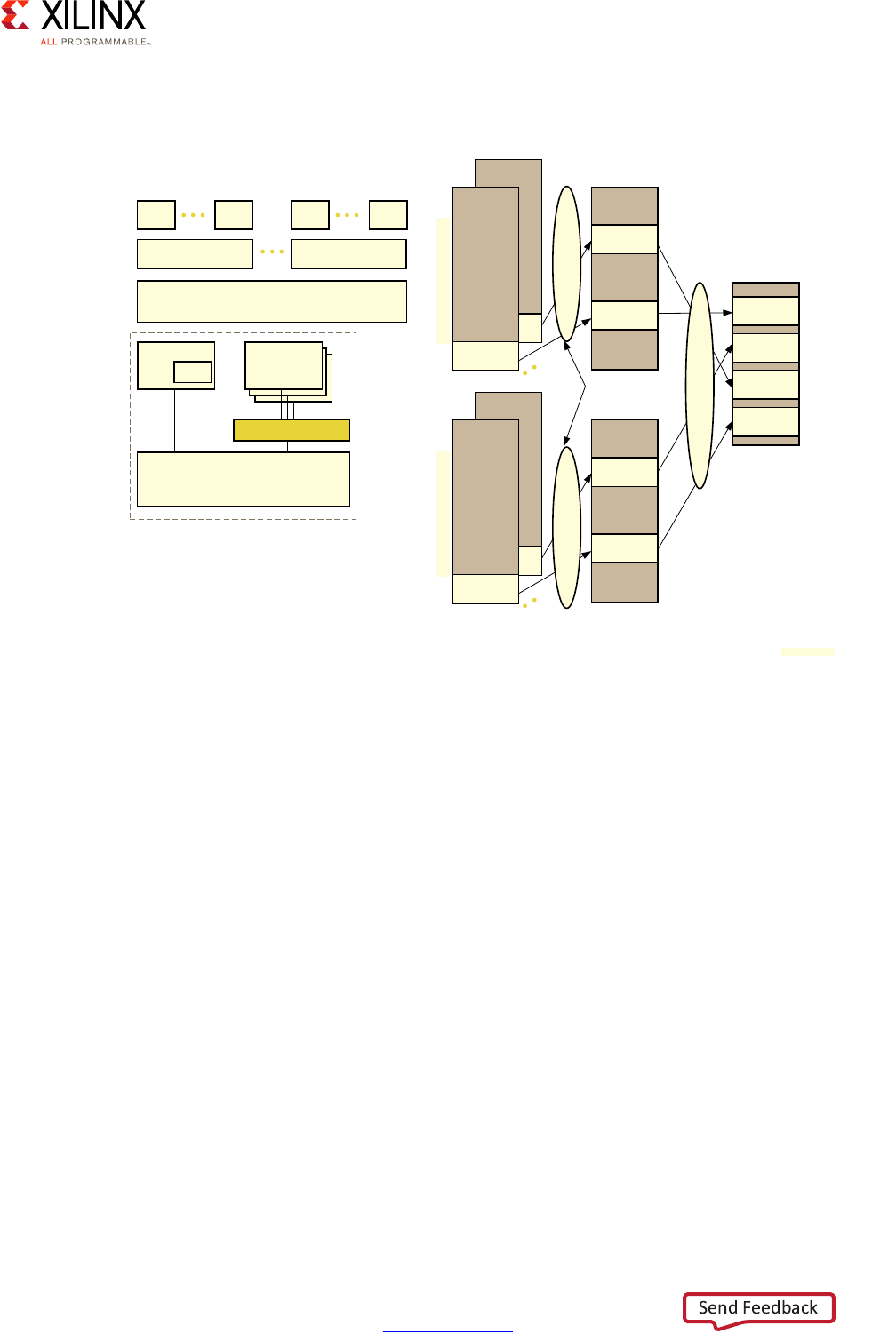

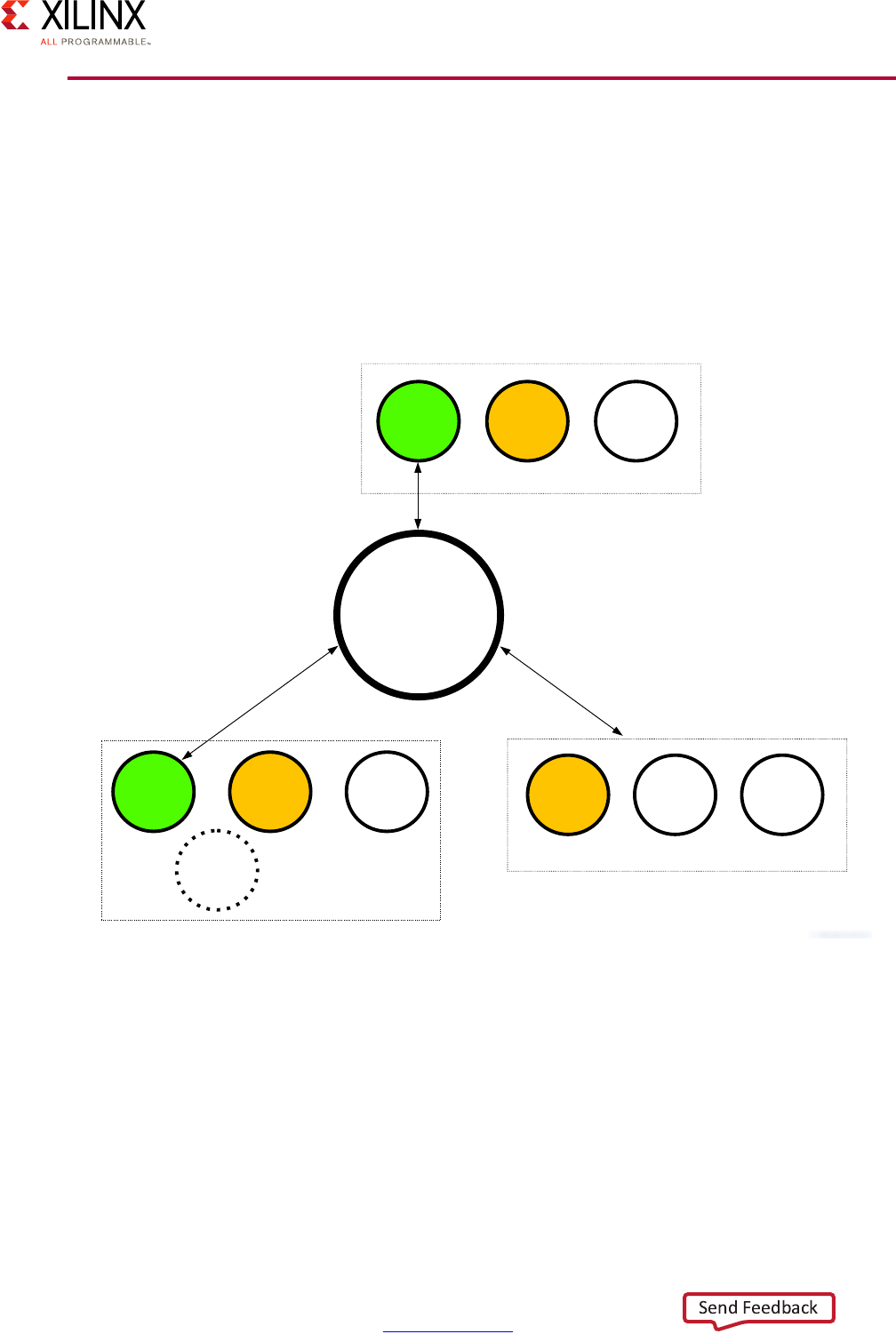

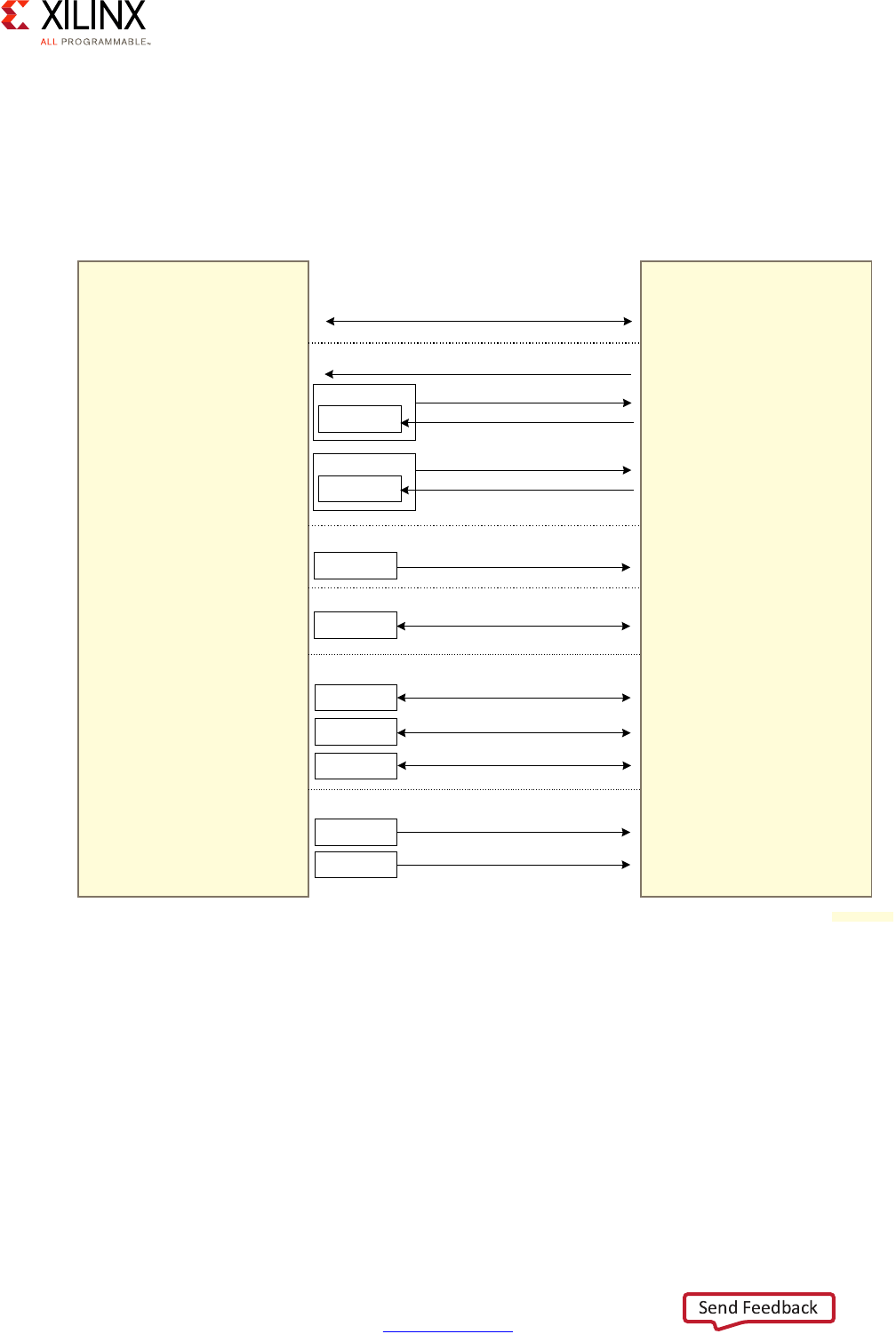

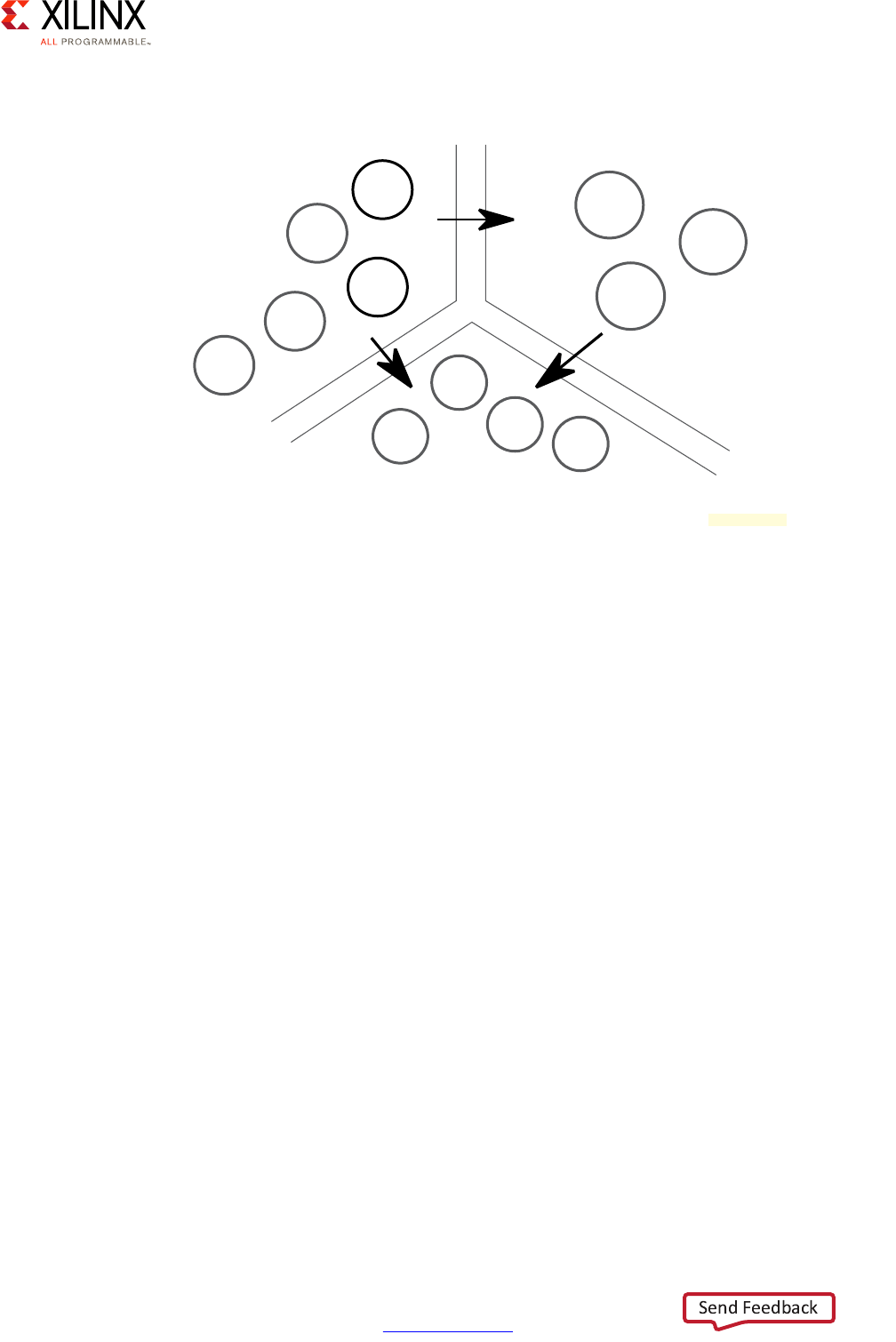

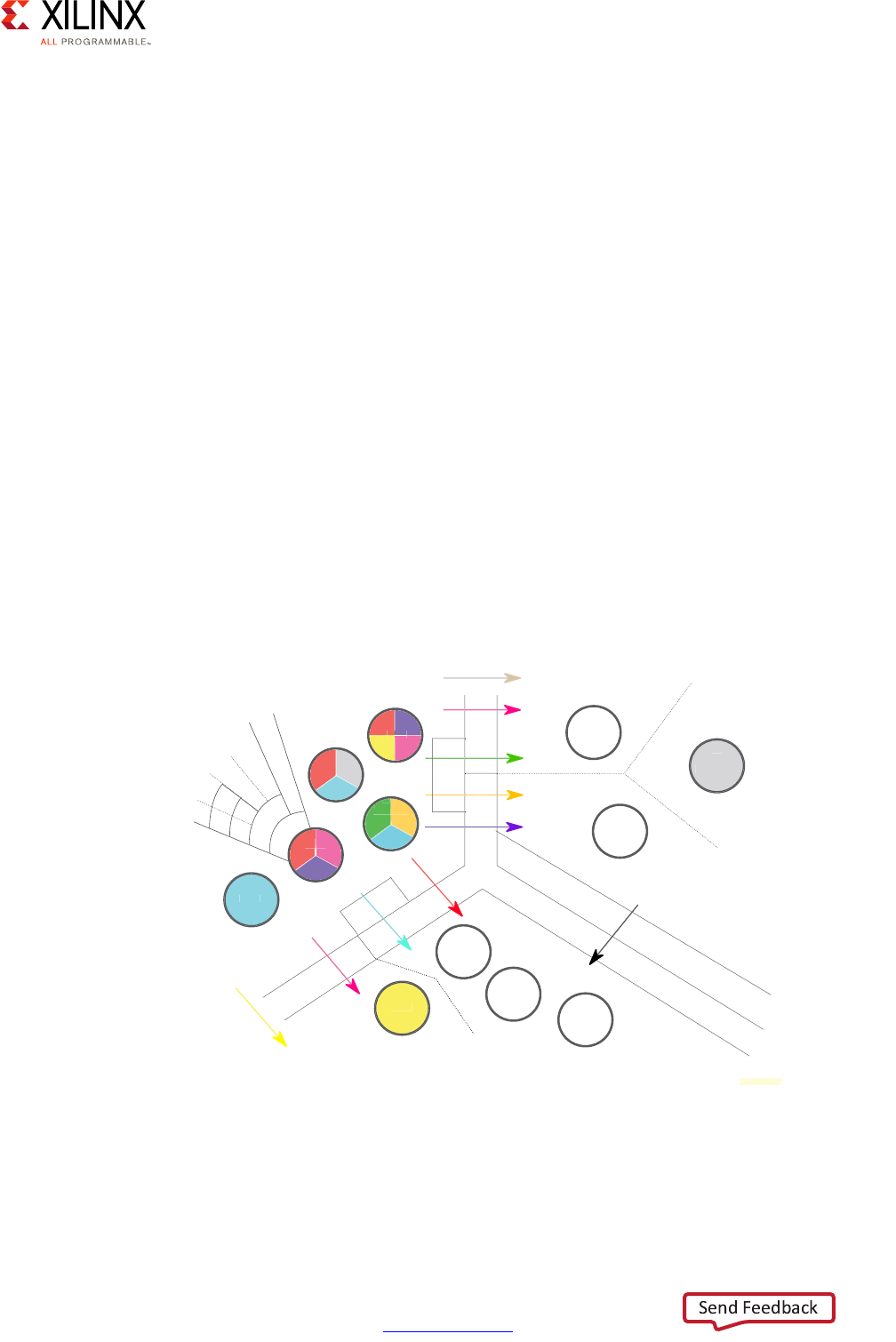

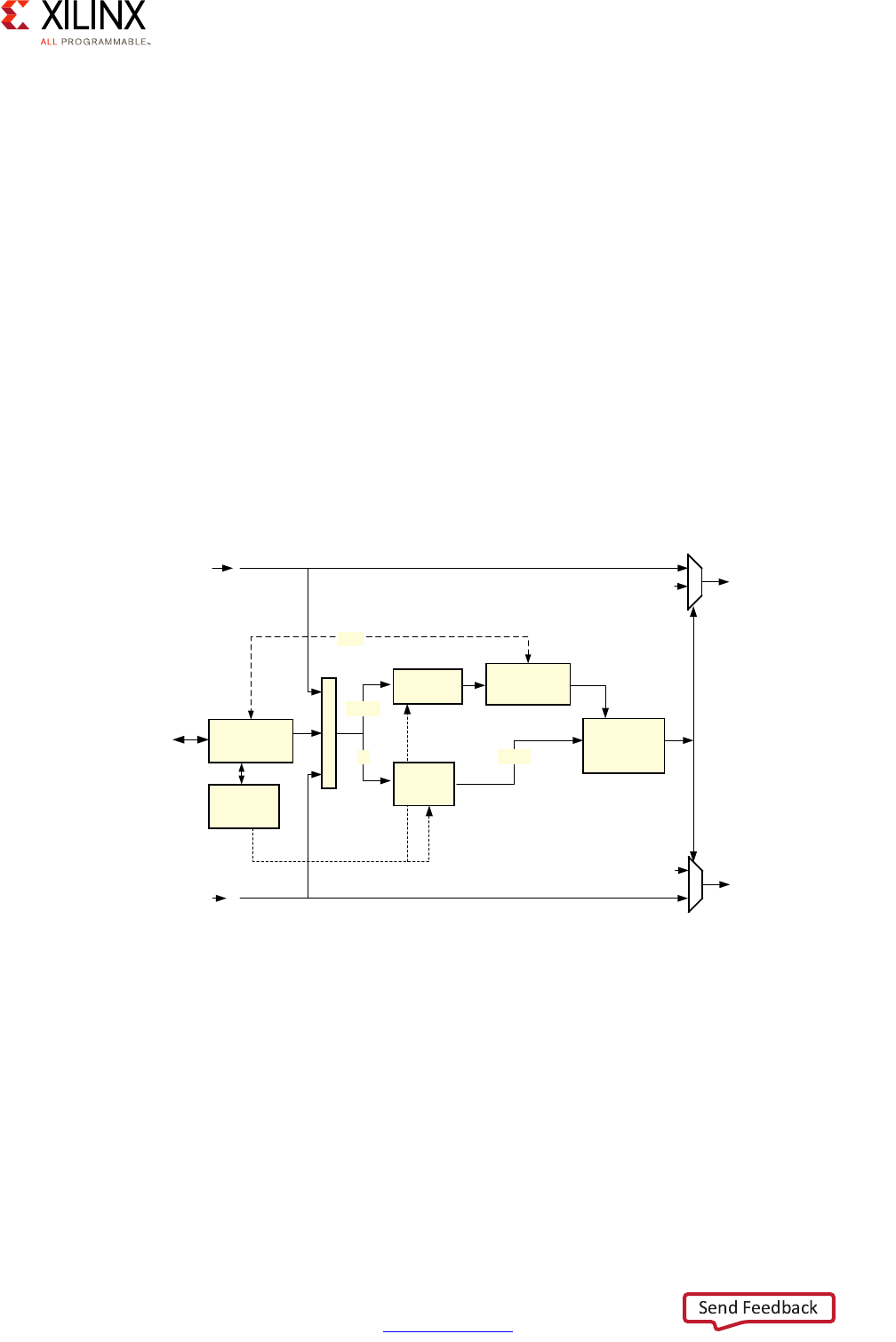

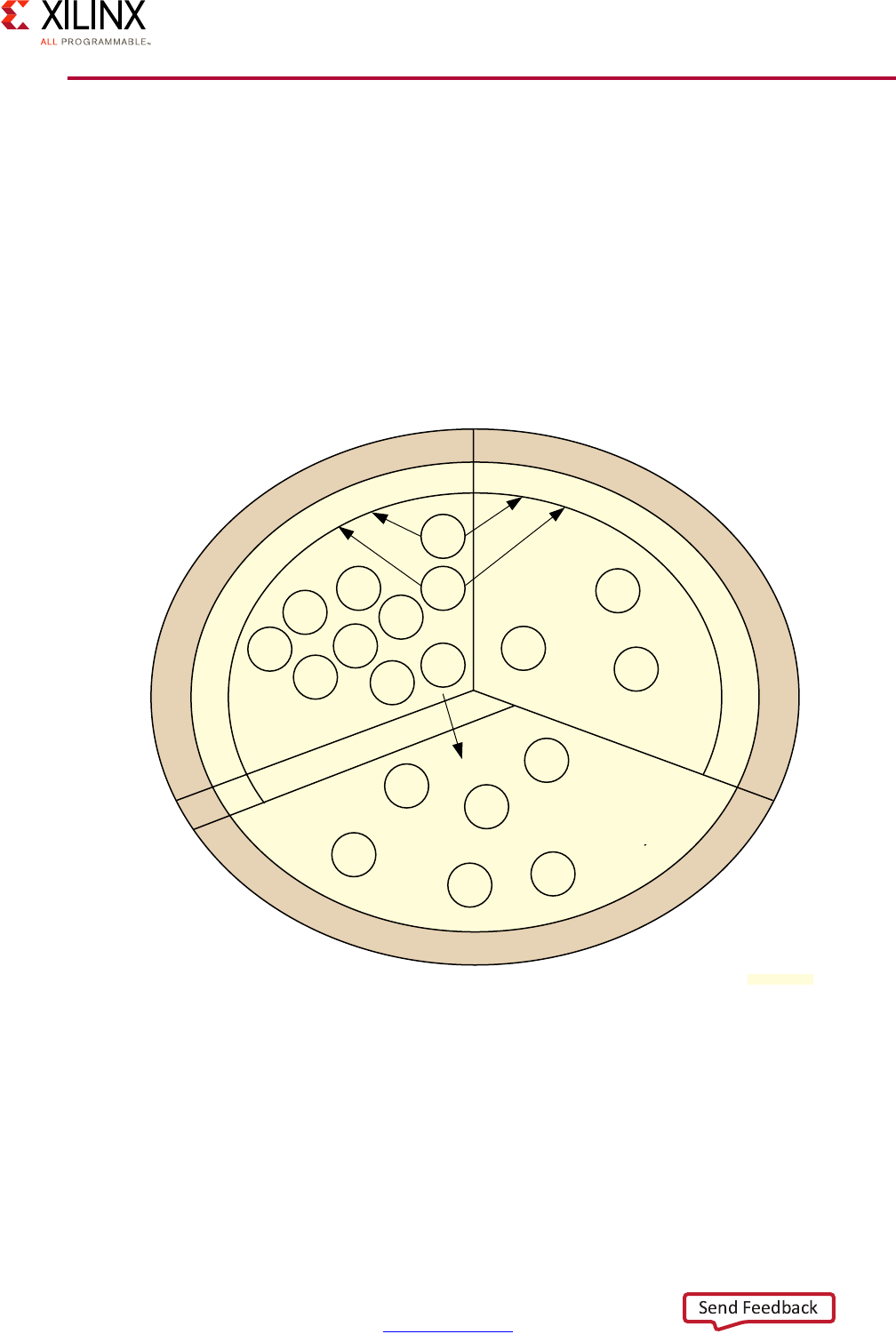

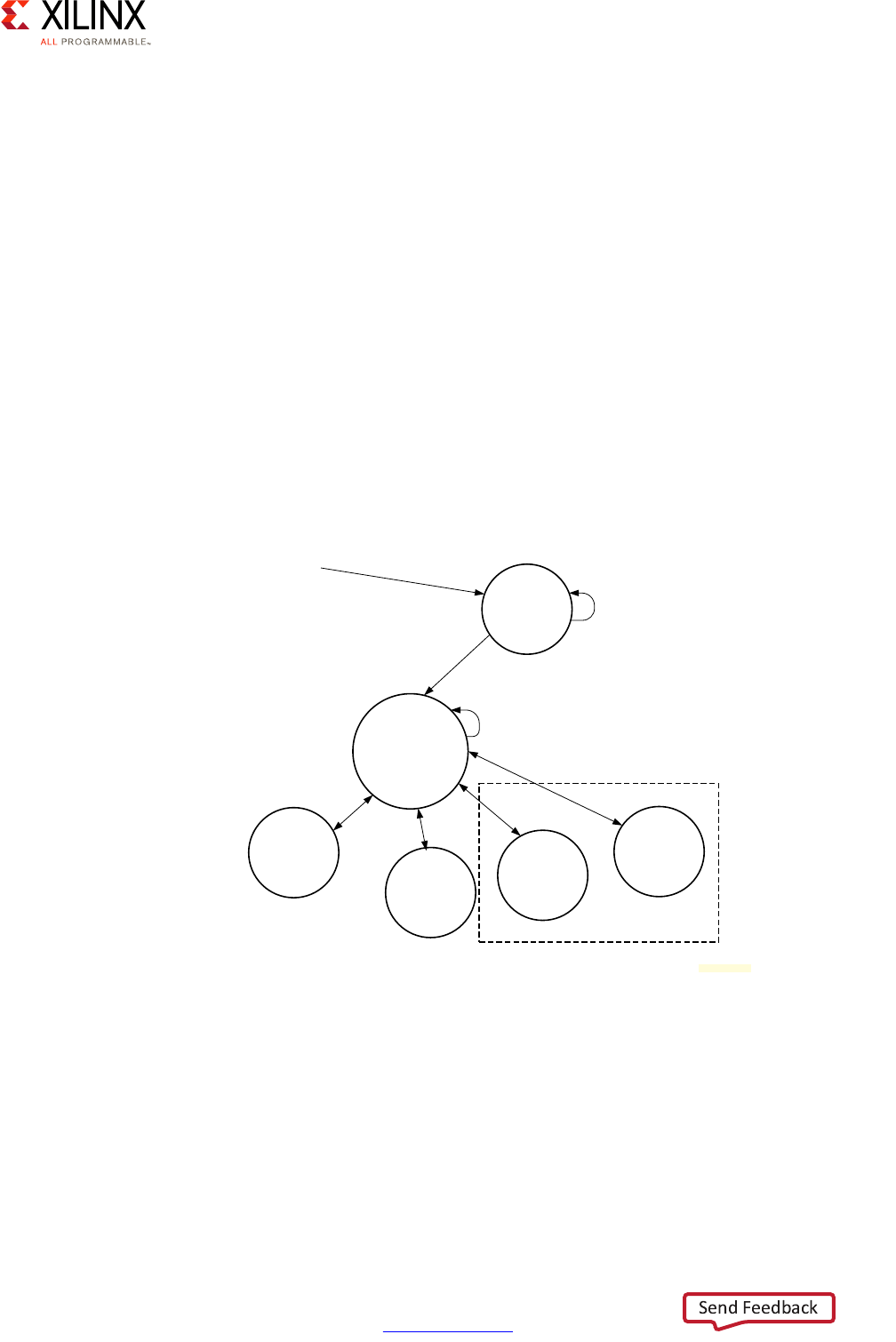

The Vector Methodology, as applied to the Zynq UltraScale+ MPSoC device, is described by

the following diagram:

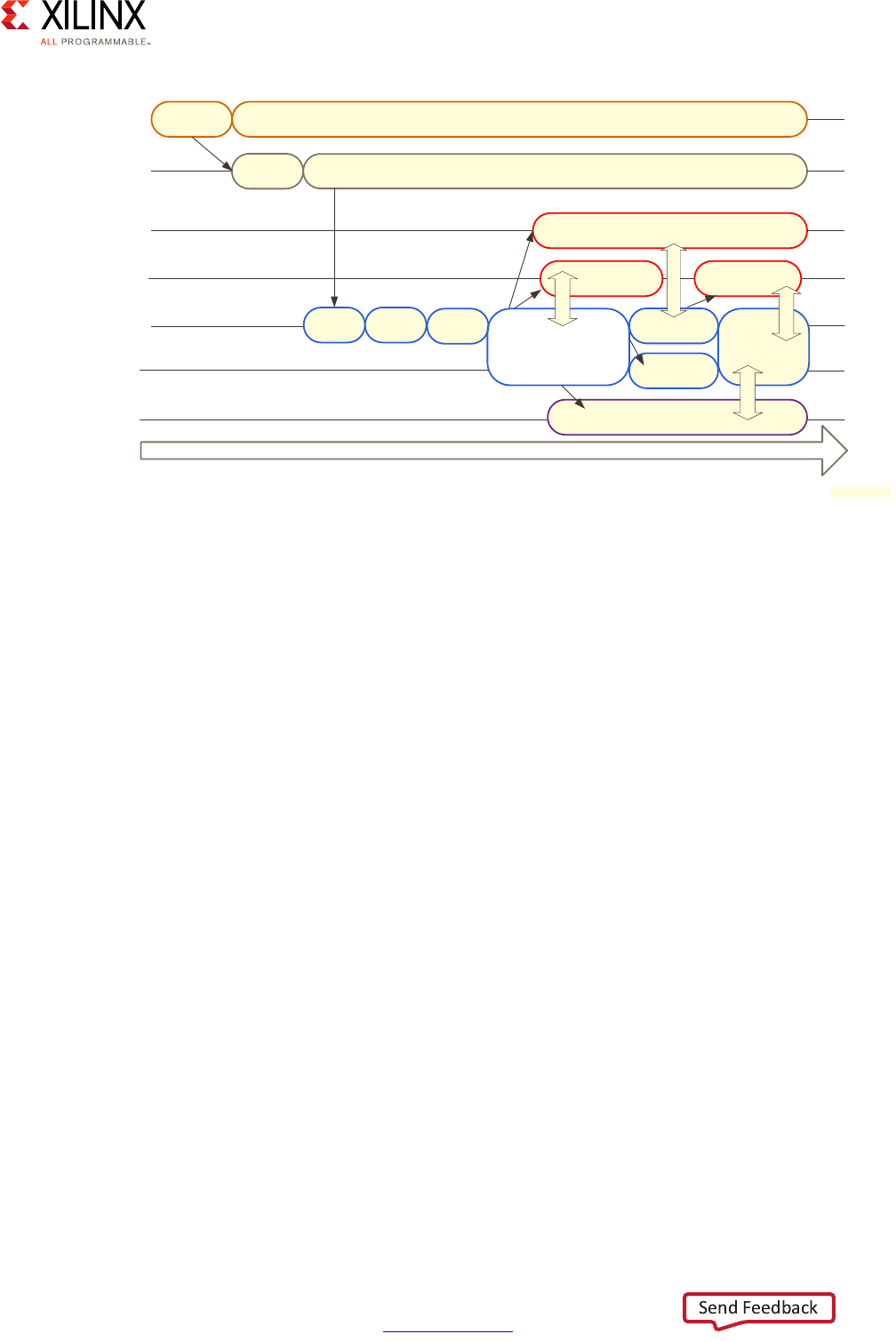

In this diagram, each line radiating out from the center point represents a functional

domain of the Zynq UltraScale+ MPSoC platform. The center point represents zero

requirements/need for that functional domain, while the end of each line represents a high

level of requirements/need/complexity. Taking this diagram as a starting point, a Systems

Architect, working with a FAE or Sales Engineer, can plot the degree to which a specific

functional domain applies to their design and link those points together to get a general

sense of the problem set they are trying to address.

Consider the following Advanced Driver Assistance System (ADAS) example:

X-Ref Target - Figure 1-2

Figure 1-2: Vector Methodology Diagram

Power

Processing System

Programmable Logic

Real-Time Processing

Security

Multimedia

Memory

Peripherals

System Software

Configurations

Resource Isolation

and Partitioning

X18044-032917

Zynq UltraScale+ MPSoC Embedded Design Methodology Guide 10

UG1228 (v1.0) March 31, 2017 www.xilinx.com

Chapter 1: Introduction

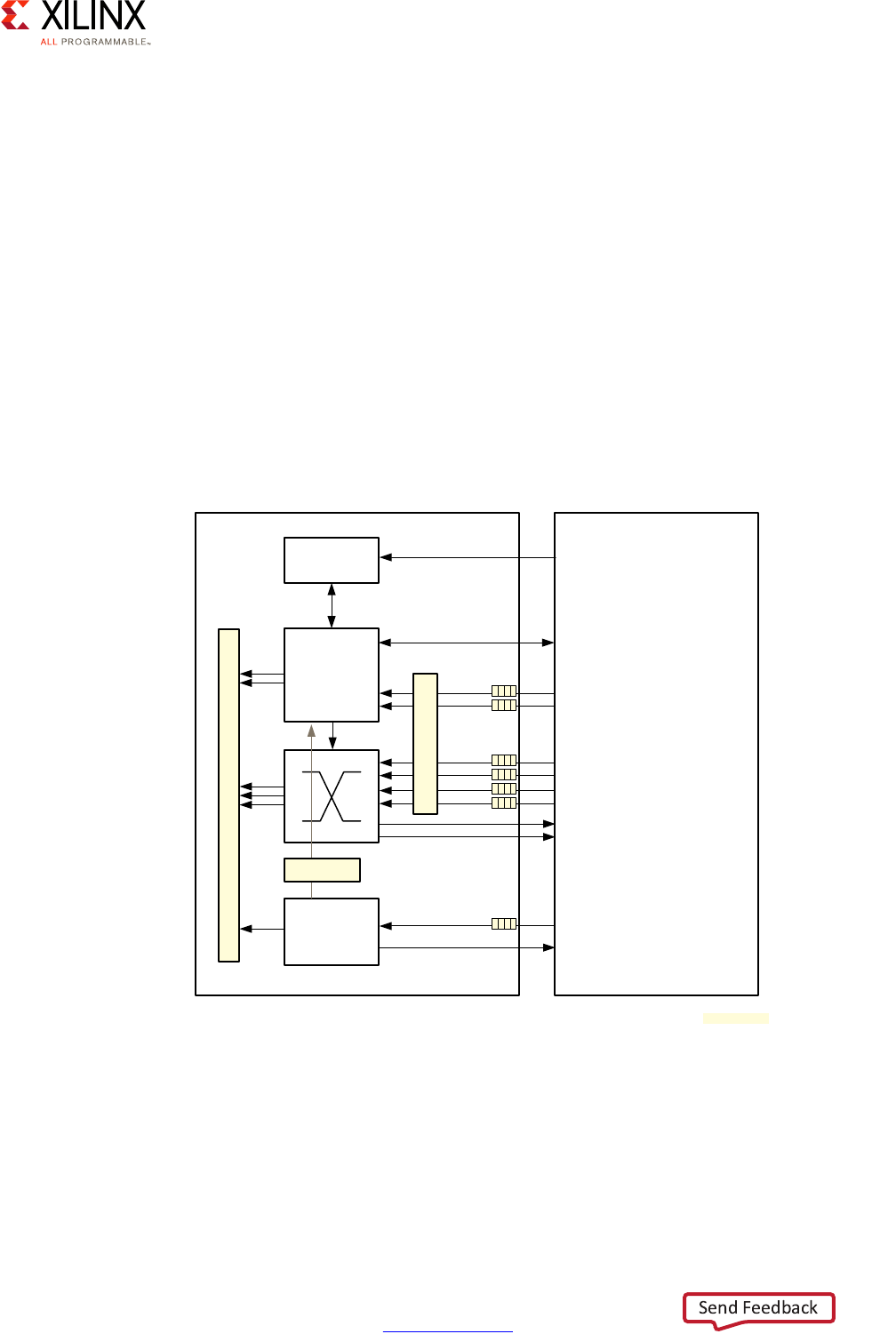

In this example, we can see that the system software configurations, real-time processing,

programmable logic, and processing system are all at the maximum value. This translates

into a likely greater emphasis on specific chapters within this guide that explain the parts of

the MPSoC that a designer needs to focus on, and their pros/cons and limitations for every

one of those four vectors. With average need for power, security, multimedia, peripherals,

and resource isolation and partitioning needs, you can then appropriately scale back the

efforts to use in these areas. This allows more freedom to focus efforts on vectors that are

more critical to the product. Therefore, use of this guide allows you to translate your level

of requirements into a set of design choices and vary the degree of effort and the resources

put into optimizing certain parts of their UltraScale+ MPSoC-based designs.

Note: Although the diagram is meant as flexible tool for gauging the relevance of this guide's

different chapters, that is NOT to say that any vectors should be skipped. Be sure to read the entire

methodology guide before you make final design decisions.

X-Ref Target - Figure 1-3

Figure 1-3: Vector Methodology Example: Advanced Driver Assistance System

Power

Processing System

Programmable Logic

m

P

r

og

ra

Real-Time Processing

Security

Multimedia

Memory

Peripherals

System Software

Configurations

Resource Isolation

and Partitioning

Advanced Driver Assistance System (ADAS) Design Example

y

ste

m

P

R

e

Multimedia

l

s

re

s

Re

so

an

d

P

rocessin

g

Sy

y

Securit

y

o

ur

ce

I

so

la

ti

on

d Partitioning

y

M

emo

ry

g

70

80

90

100

60

50

40

30

20

10

0

X18043-032917

Zynq UltraScale+ MPSoC Embedded Design Methodology Guide 11

UG1228 (v1.0) March 31, 2017 www.xilinx.com

Chapter 1: Introduction

Accessing Documentation and Training

Access to the right information at the right time is critical for timely design closure and

overall design success. Reference guides, user guides, tutorials, and videos get you up to

speed as quickly as possible with Xilinx tools. This section lists some of the sources for

documentation and training.

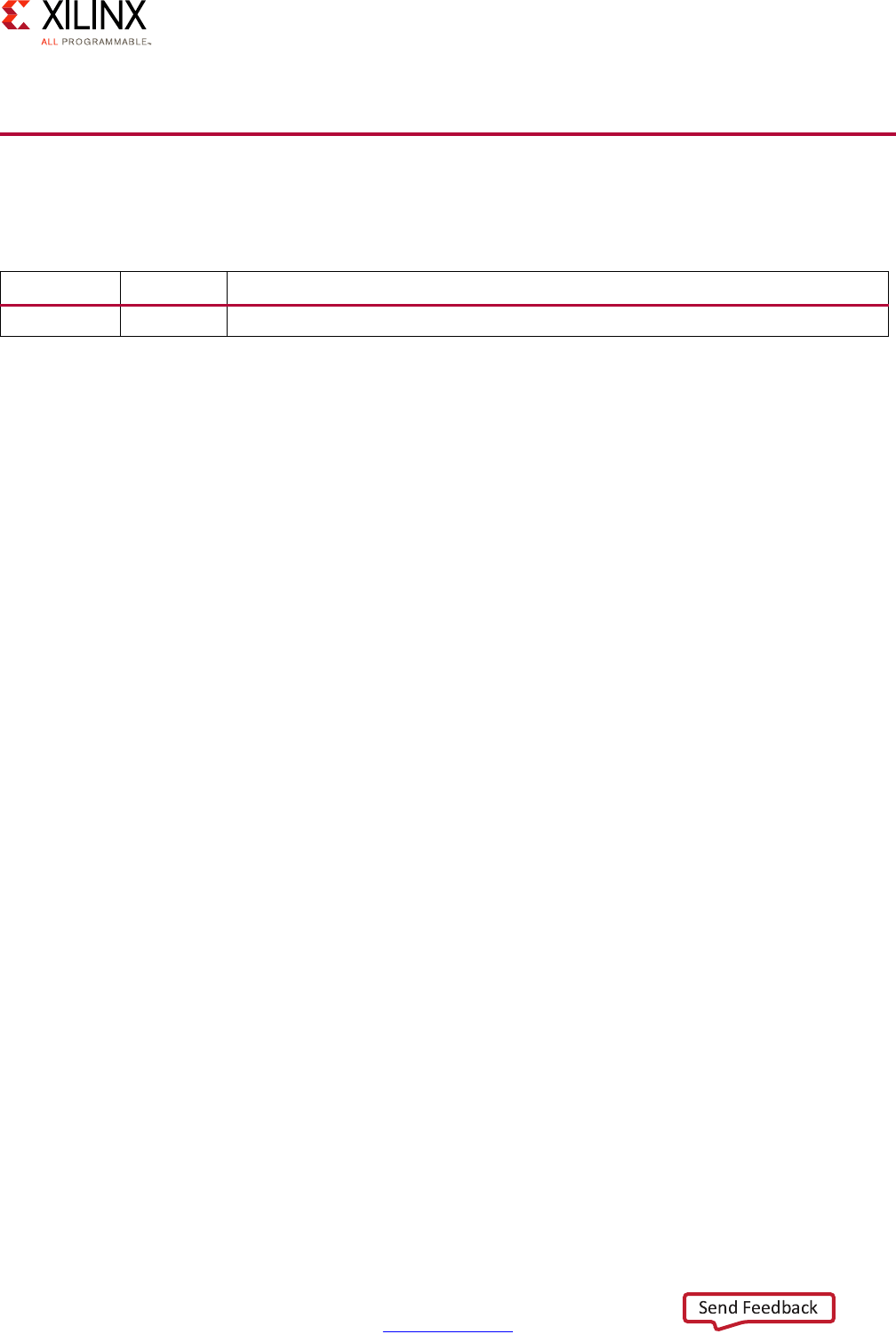

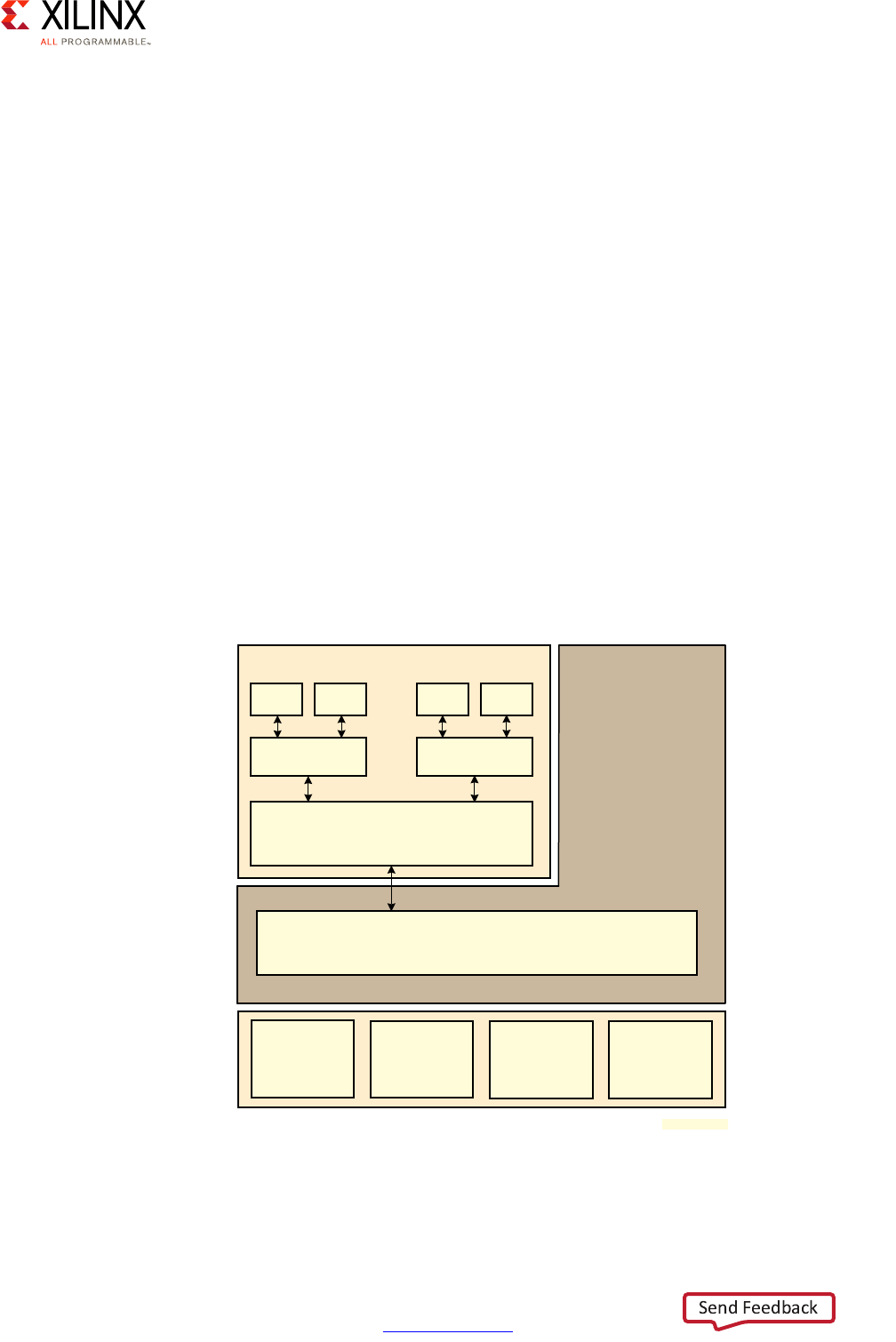

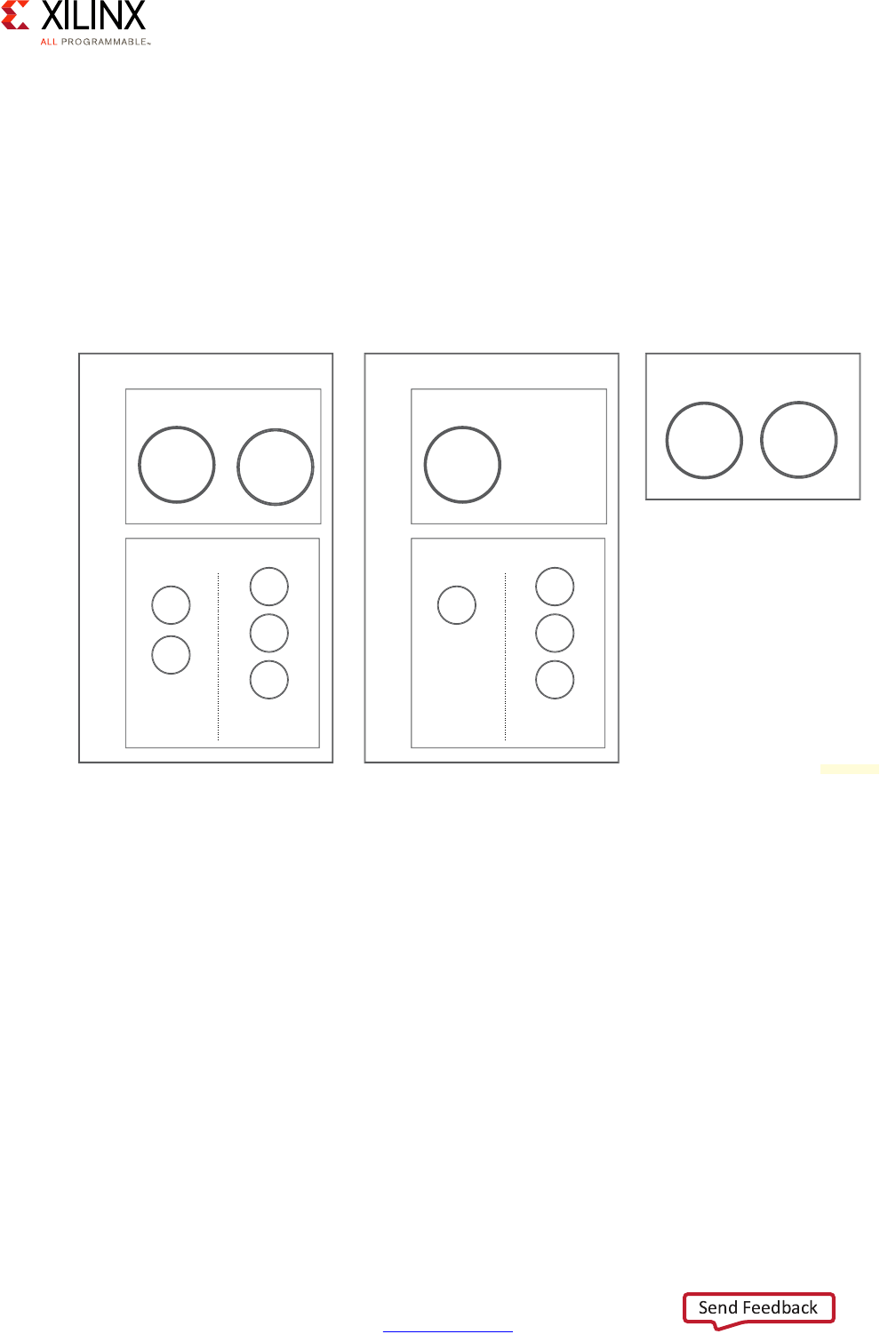

Using the Documentation Navigator

The Xilinx Documentation Navigator ships as part of the Xilinx tools. It provides an

environment to access and manage the entire set of Xilinx software and hardware

documentation, training, and support materials. Documentation Navigator allows you to

view current and past Xilinx documentation. The documentation display can be filtered

based on release, document type, or design task. When coupled with a search capability,

you can quickly find the right information.

Documentation Navigator scans the Xilinx website to detect and provide documentation

updates. The Update Catalog feature alerts you to available updates, and gives details

about the documents that are involved. Xilinx recommends that you always update the

catalog when alerted to keep it current. You can establish and manage local documentation

catalogs with specified documents.

The Documentation Navigator has a tab called the Design Hub View. Design hubs are

collections of documentation related by design activity, such as Zynq UltraScale+ MPSoC

Design Overview, PetaLinux Tools, and the Xilinx Software Development Kit (SDK).

Documents and videos are organized in each hub in order to simplify the learning curve for

that area. Each hub contains an Embedded Processor Design section, a Design Resources

section, and a list of support resources. For new users, the Embedded Processor Design

section (shown in Figure 1-4) provides a good place to start.

Zynq UltraScale+ MPSoC Embedded Design Methodology Guide 13

UG1228 (v1.0) March 31, 2017 www.xilinx.com

Chapter 2

Processing System

The Zynq® UltraScale+™ MPSoC device gives system designers considerable power and

flexibility in the way the on-board processing capabilities can be used. In addition to the

configurability of the application processing unit (APU) and the real-time processing unit

(RPU), the Zynq UltraScale+ MPSoC device provides several dedicated processing blocks for

taking care of different types of tasks. This chapter covers the processing system

capabilities of the Zynq UltraScale+ MPSoC device, their interconnection and the

recommendations for their use.

Defining Your Processing Needs

Modern-day embedded designs often combine a complex mix of workloads, constraints

and external dependencies. Finding the best fit between each of your application's

processing needs within the many processing blocks of the Zynq UltraScale+ MPSoC device

is crucial to ensuring overall product success. The next section will start introducing you to

the core concepts behind each of the processing blocks of the Zynq UltraScale+ MPSoC

device while each block and important component will be discussed in greater detail later

in this chapter.

Meanwhile, you can start thinking about the following questions with regards to your

design:

1. What are the critical needs for your application? For each part:

a. Is it continuously processing time-sensitive data?

b. Is it real-time or mission critical?

c. Does it relate to multimedia? Or, rather, is it a general-purpose computing workload?

d. Does it require acceleration beyond the processing system's performance?

2. What type of interaction is required between system components? For each set of

components:

a. Do they need to exchange data?

b. If so, how much and at what frequency?

c. Do they need to notify each other of key events?

Zynq UltraScale+ MPSoC Embedded Design Methodology Guide 14

UG1228 (v1.0) March 31, 2017 www.xilinx.com

Chapter 2: Processing System

3. What type of interaction is required with the external world? For each part:

a. What kind of memory needs does it have?

b. Is it triggered by or does it respond to interrupts?

c. Does it need to utilize peripheral I/O?

4. Which components in your design are power sensitive?

Processing System Methodology

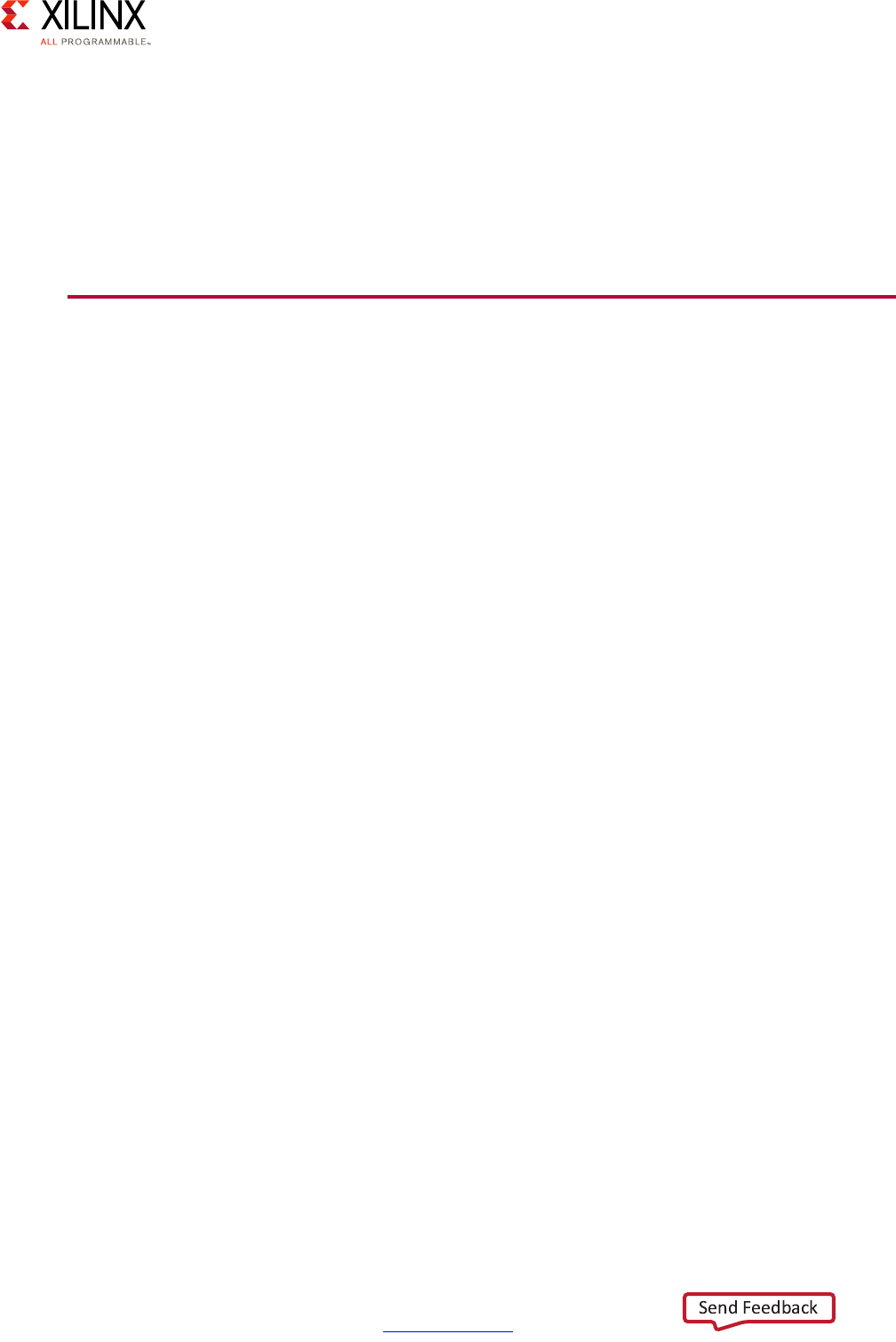

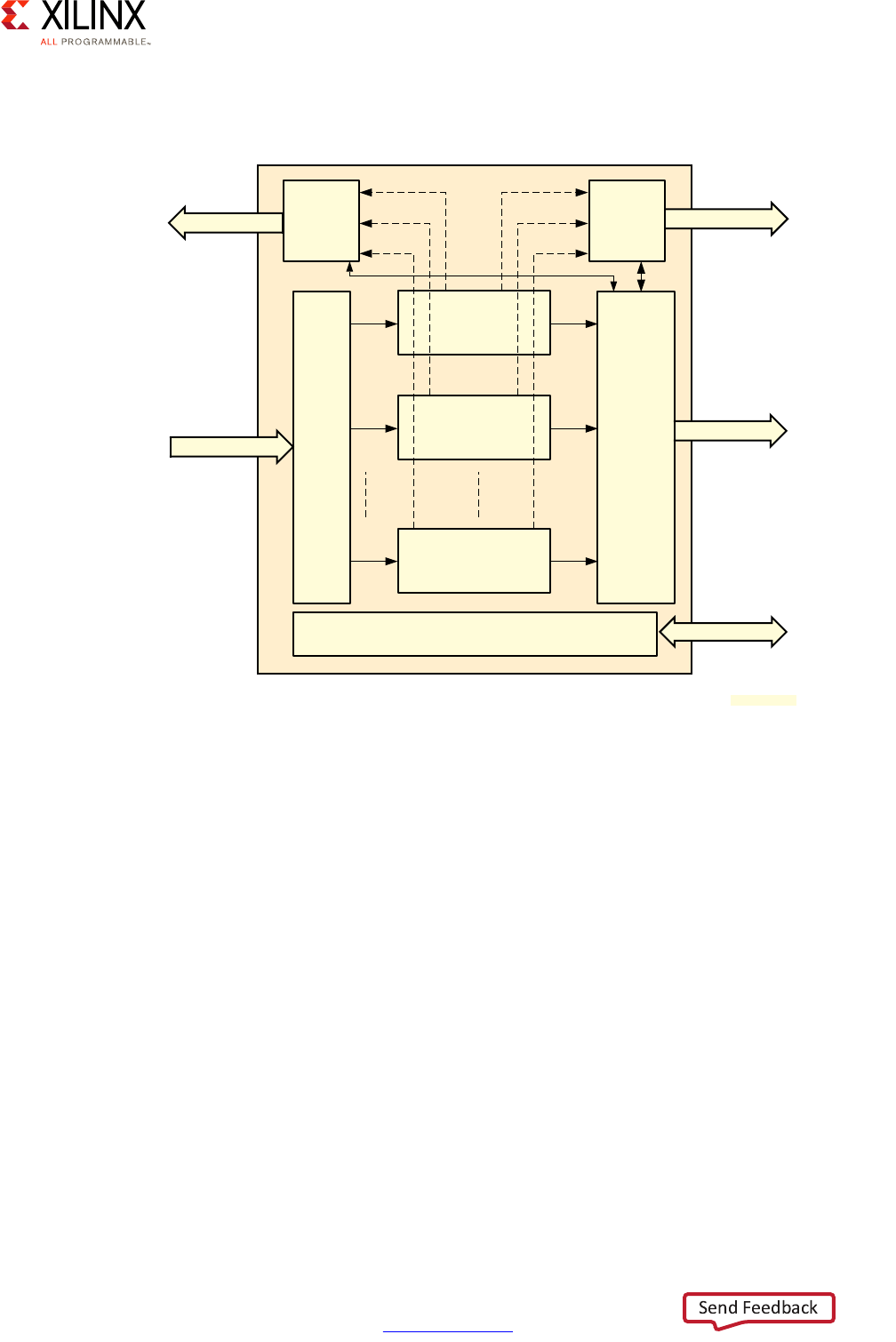

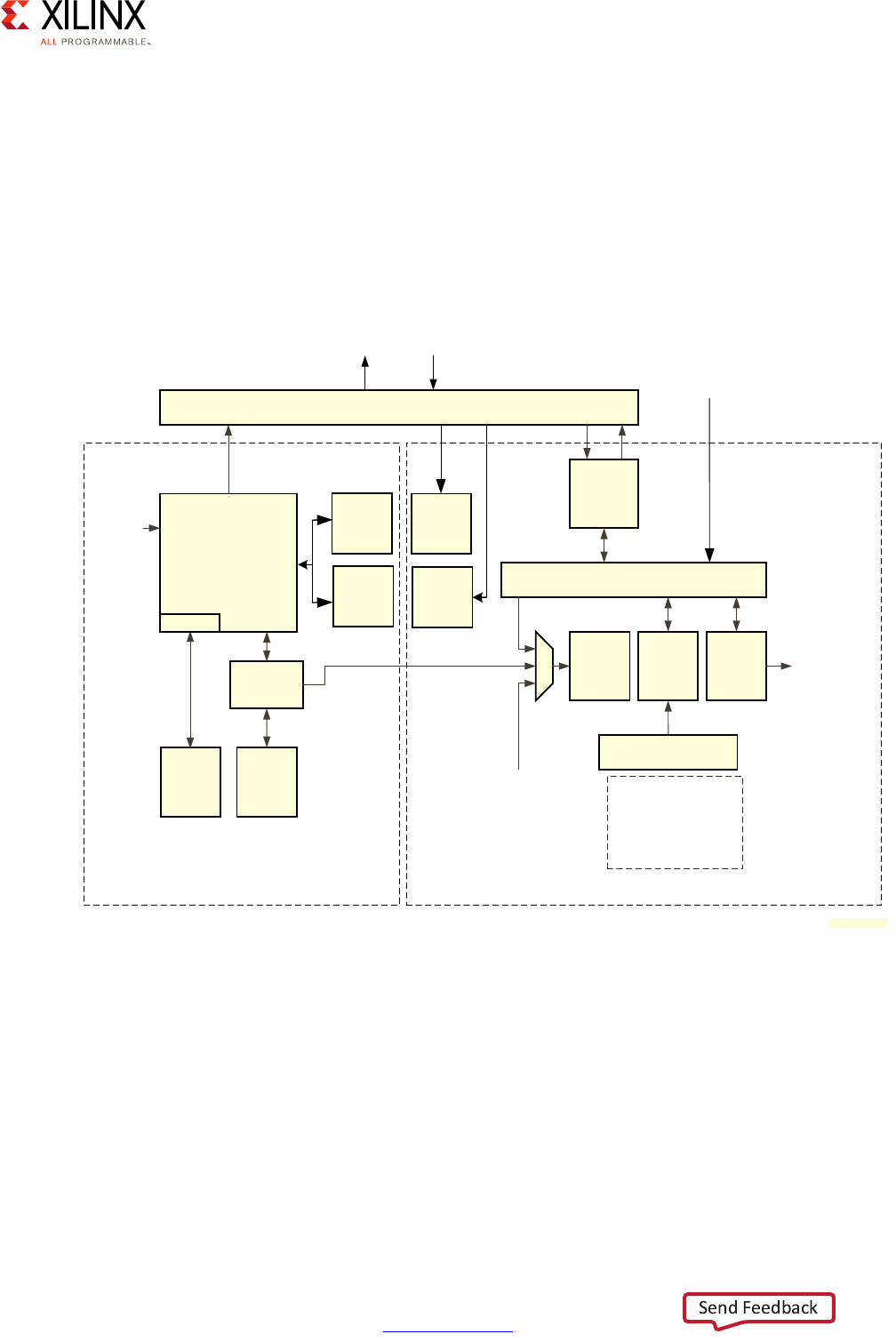

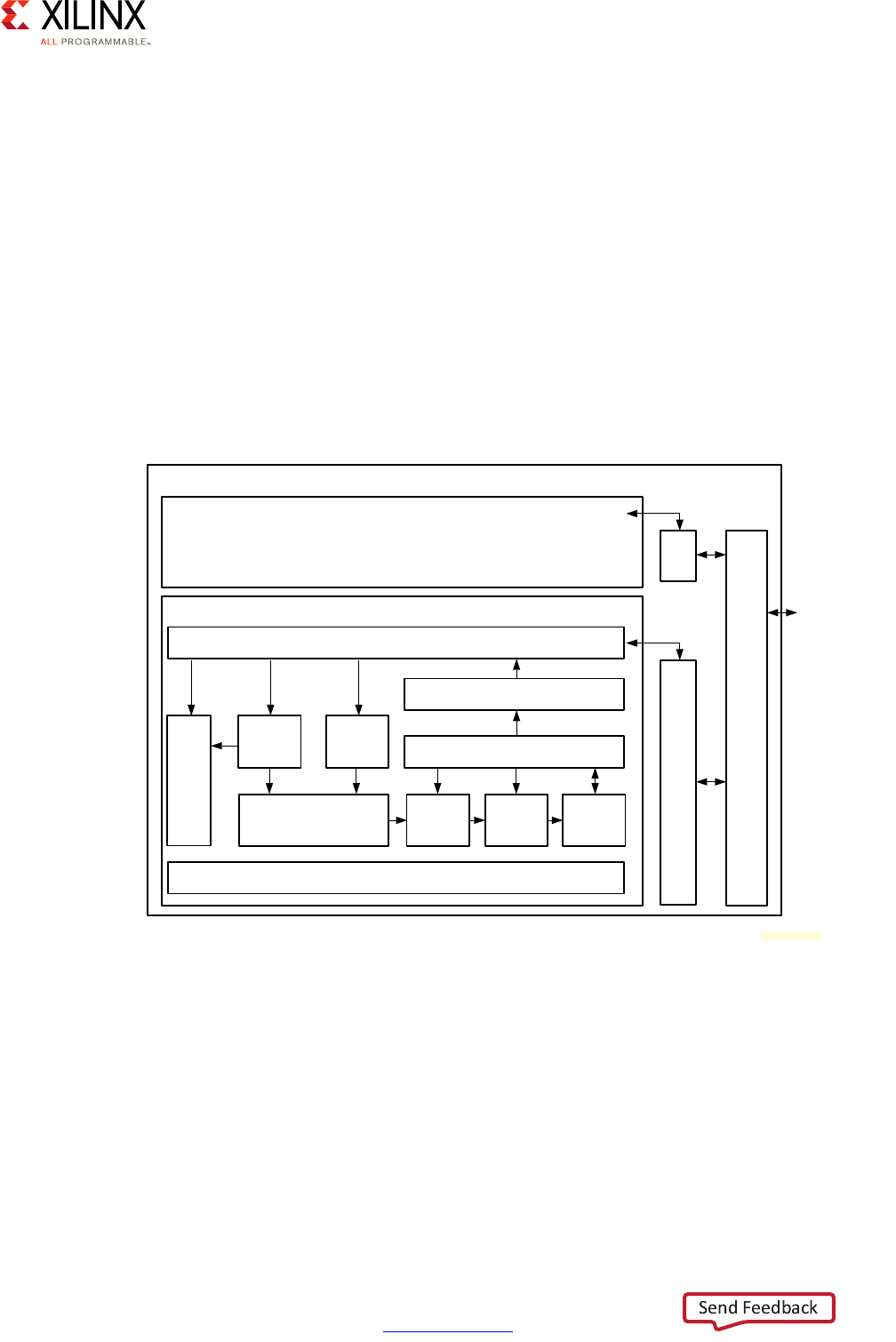

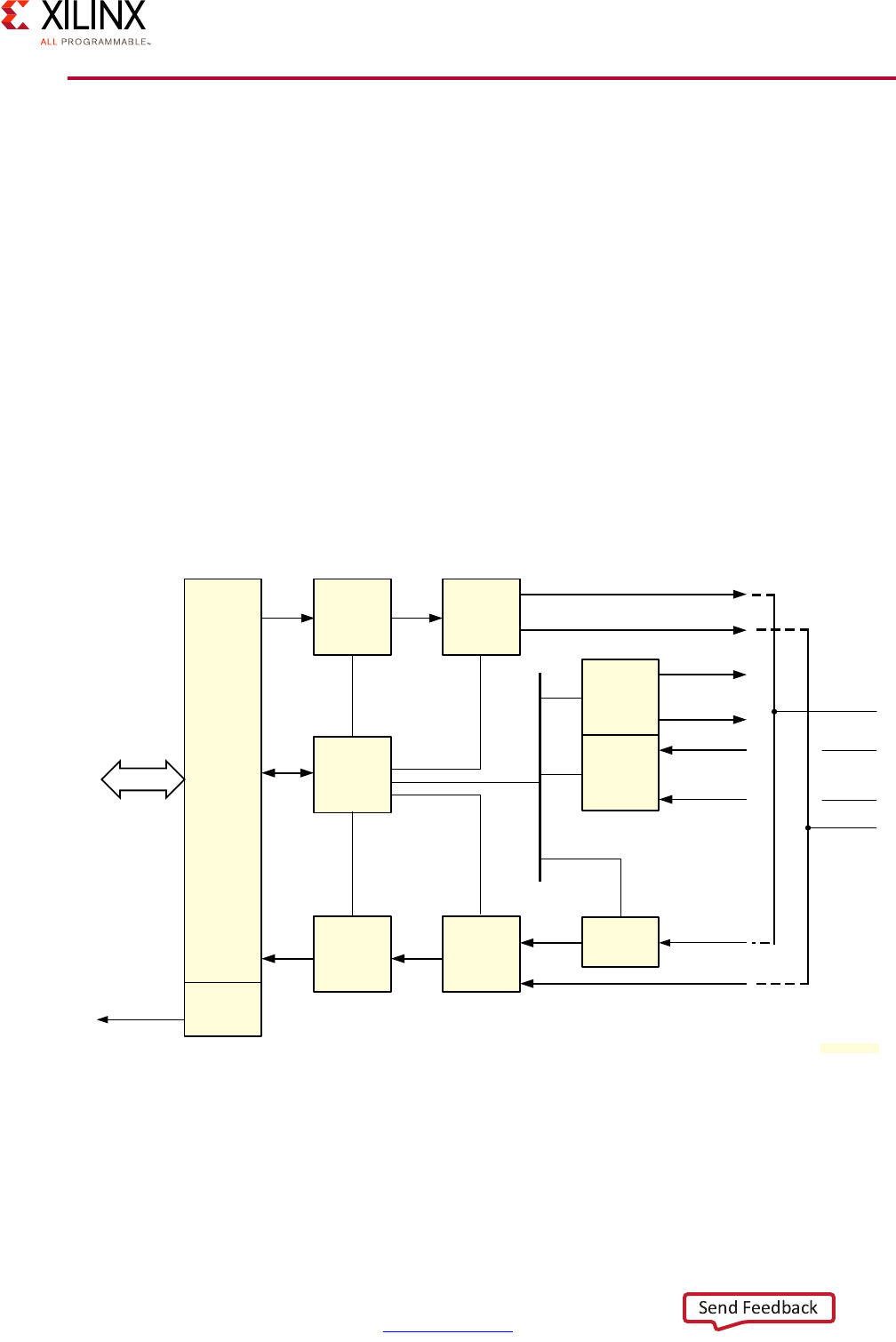

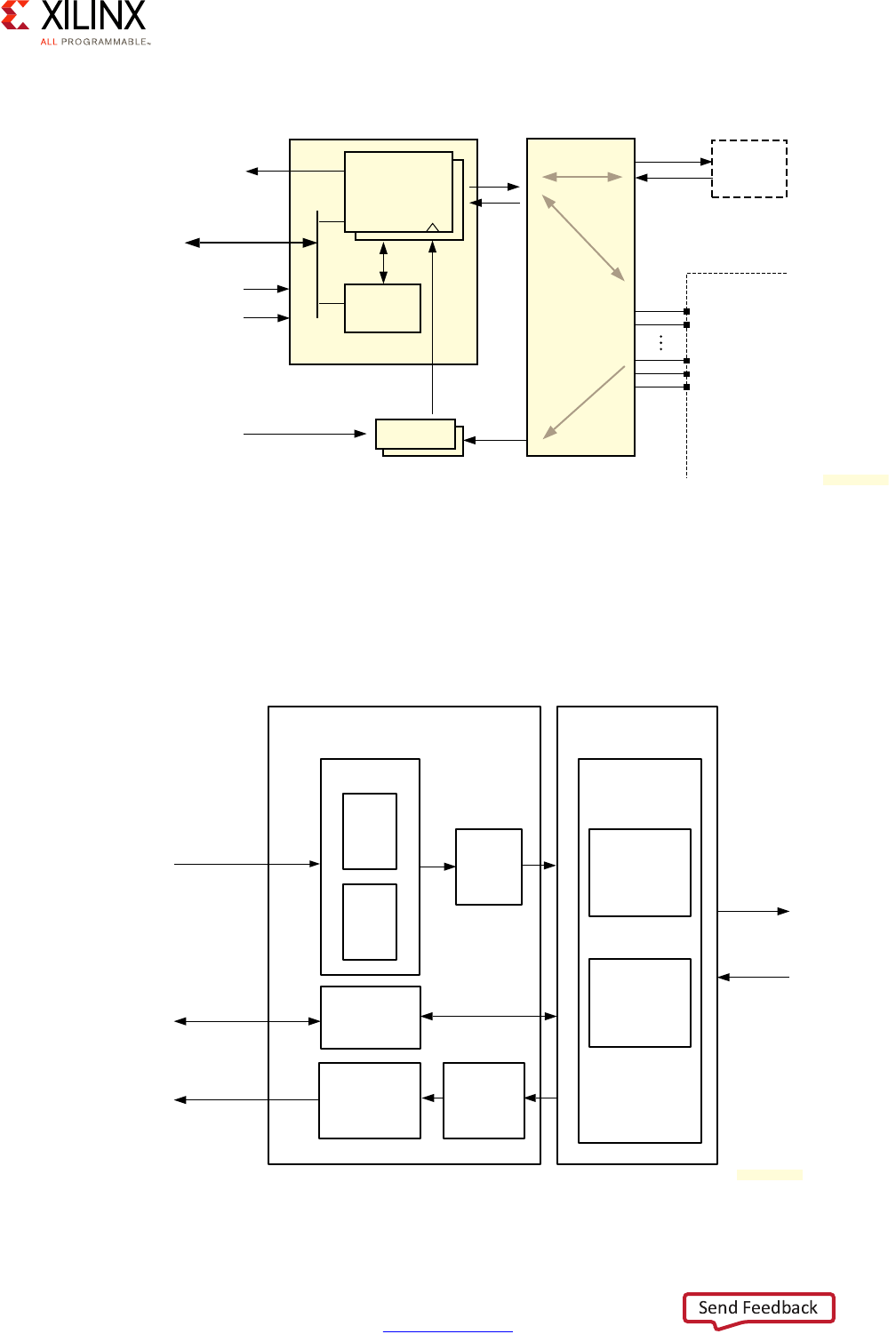

Given the flexibility of the Zynq UltraScale+ MPSoC device, care must be taken in

thoroughly analyzing its processing capabilities before mapping your design to any given

part. To that end, the following diagram provides a simplified view of the full system

diagram presented in this guide's introduction that highlights the main processing blocks of

the Zynq UltraScale+ MPSoC device and their interconnection through the interconnect,

with the blocks capable of conducting some form of customizable processing highlighted in

green.

Note: Figure 2-1 does NOT attempt to precisely represent the internal blocks of the Zynq

UltraScale+ MPSoC device. Instead, it is primarily a conceptual view for the purposes of the present

explanation.

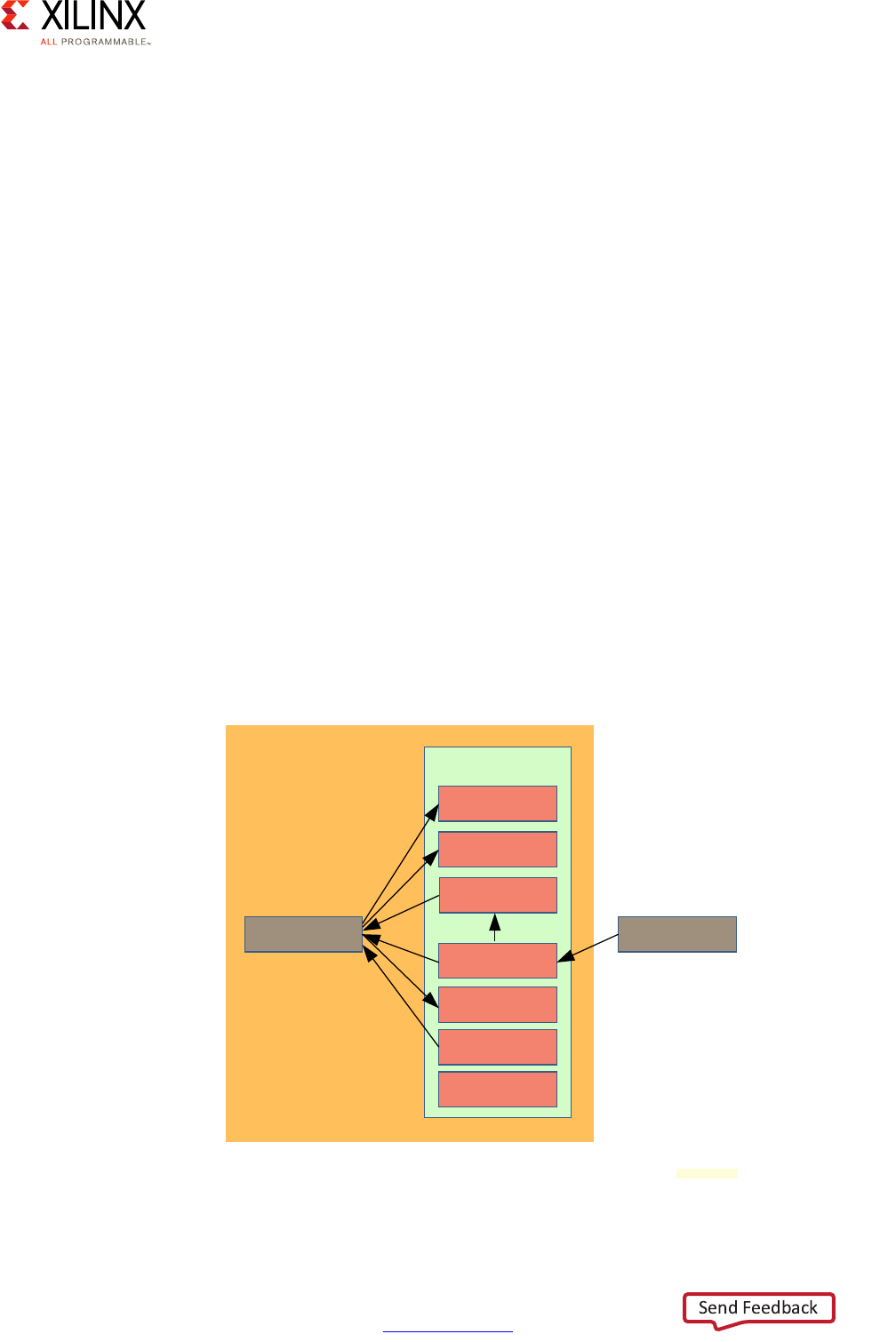

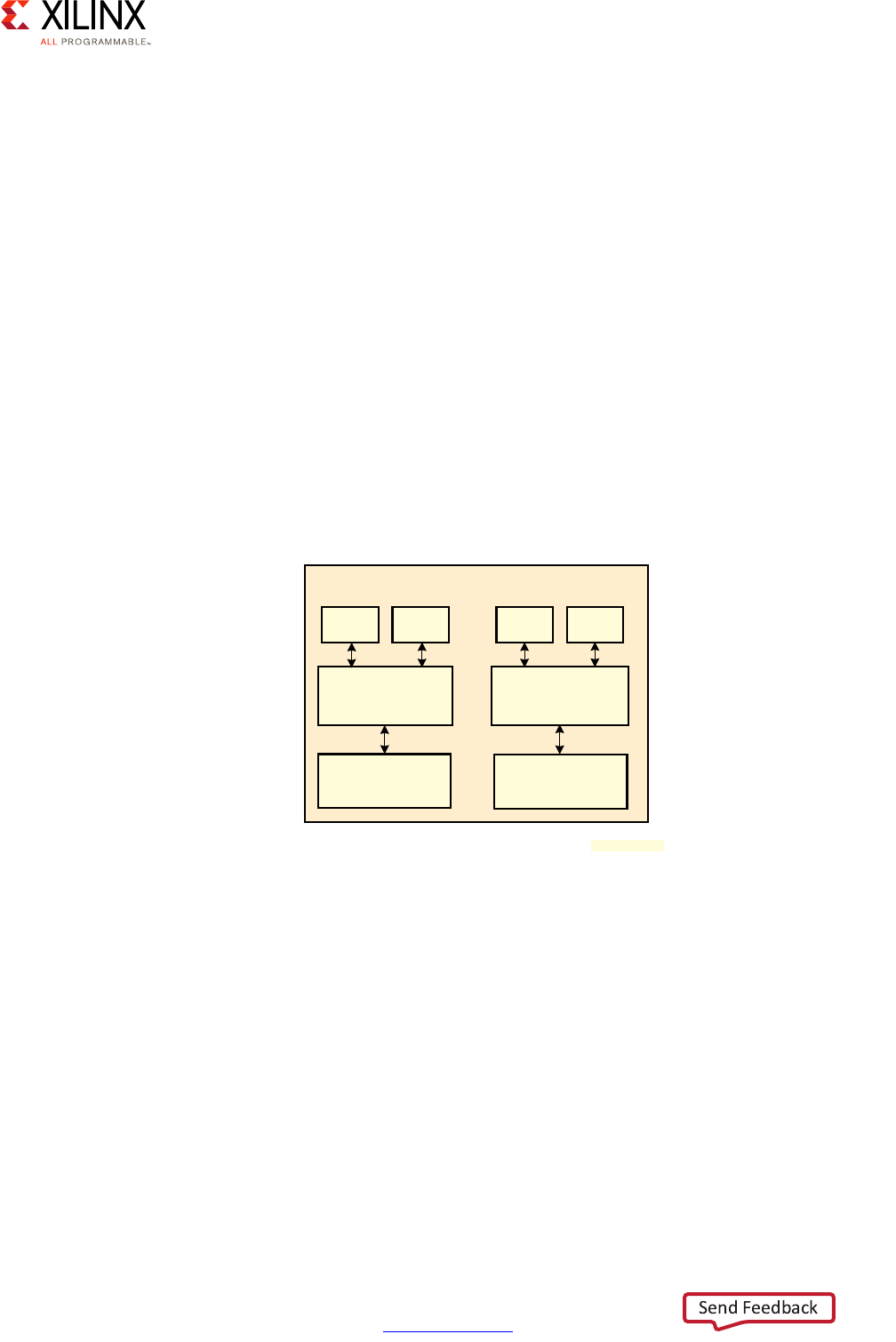

X-Ref Target - Figure 2-1

Figure 2-1: Overall Processing-Capable Blocks

RPU PMU LPD

Peripherals

Inter-Processor

Communication

Framework

(OpenAMP)

APU GPU*

CSU

PL

FPD

Peripherals

Full Power Domain

Low Power Domain

Programmable Logic Power Domain

PL

Peripherals Accelerators

Accele

r

a

t

o

r

s

* GPU support is OS-specific

X18660-032917

Zynq UltraScale+ MPSoC Embedded Design Methodology Guide 15

UG1228 (v1.0) March 31, 2017 www.xilinx.com

Chapter 2: Processing System

In the following sections we will cover the various highlighted blocks in detail along with

their related interrupt capabilities, the interconnect, and the main inter processor

communication mechanism of the Zynq UltraScale+ MPSoC device. There interconnect is

made up of several different types of blocks, for instance, each warranting its own separate

discussion. For the purposes of the present explanation, we will focus on the high-level

capabilities of each part of the system.

Looking at any given type of processing need found in your design, what you can control -

as hinted to by the questions in the previous section - is:

• Processing location

• Interconnect between processing locations

• Interaction with external world:

°Memory

°Interrupts

°Peripheral I/O

In terms of processing location, there are typical candidate blocks for certain types of

workloads:

These are general guidelines and your design might call for a different approach. Another

way to help you decide on where to locate a certain processing load is to follow this

decision tree:

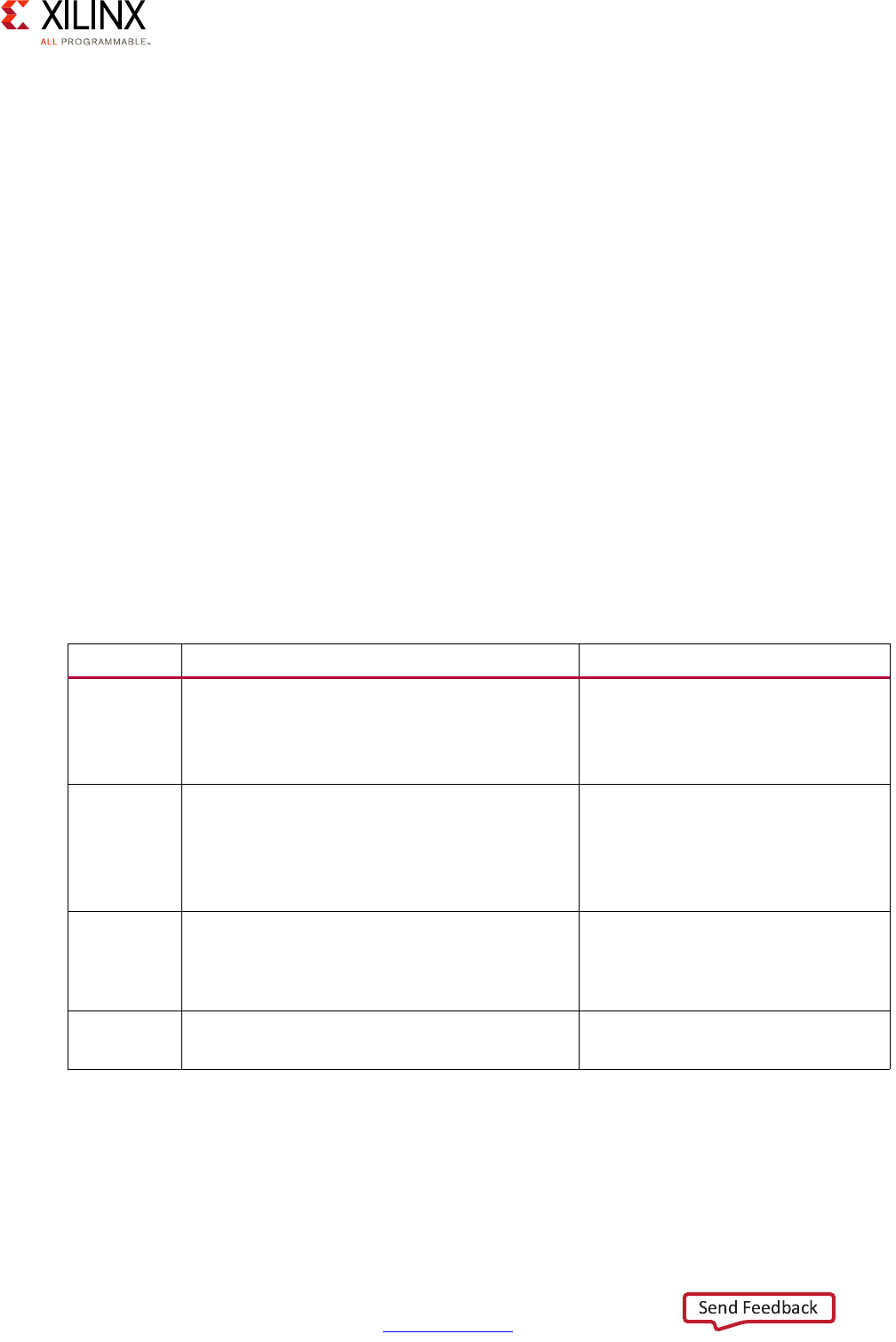

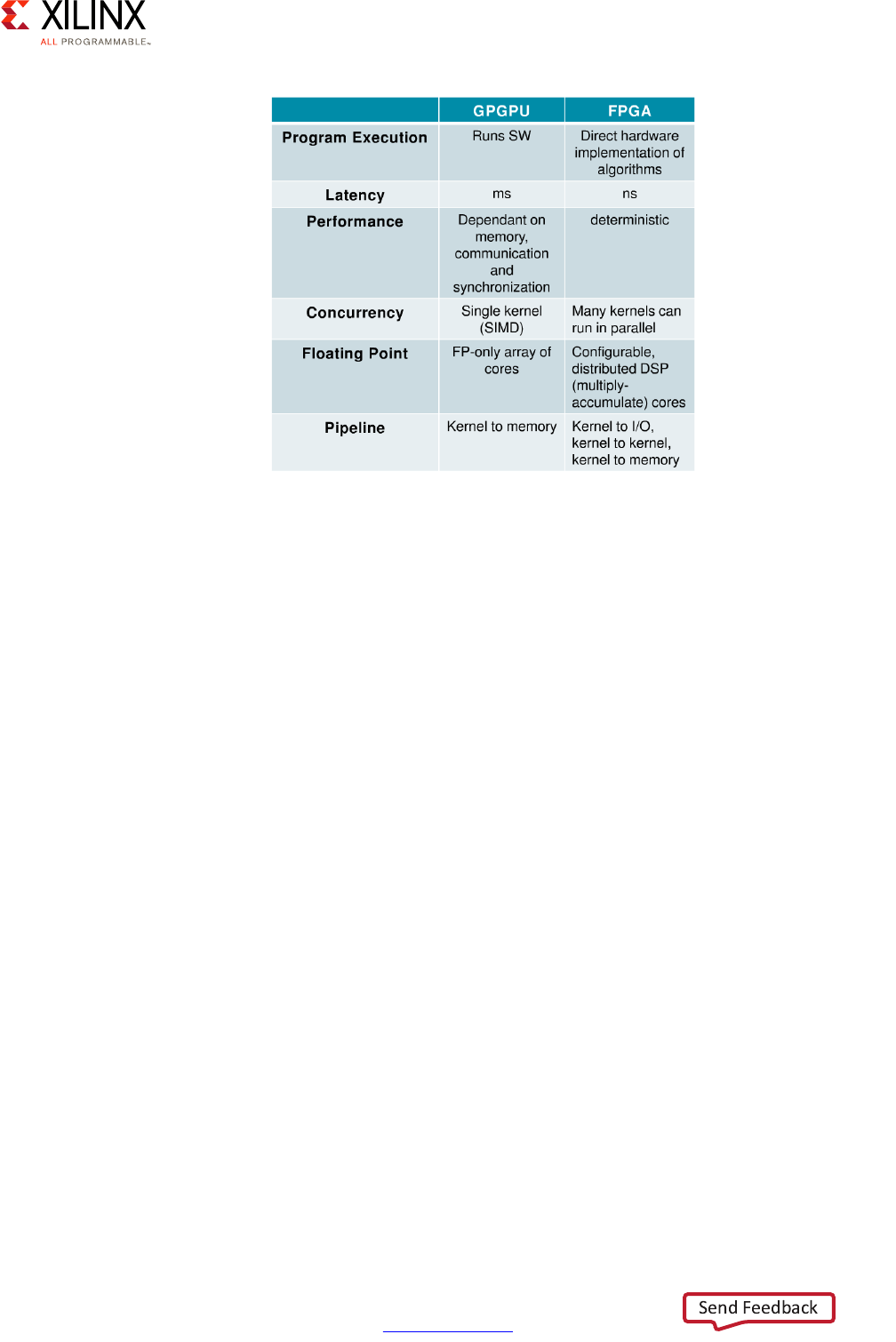

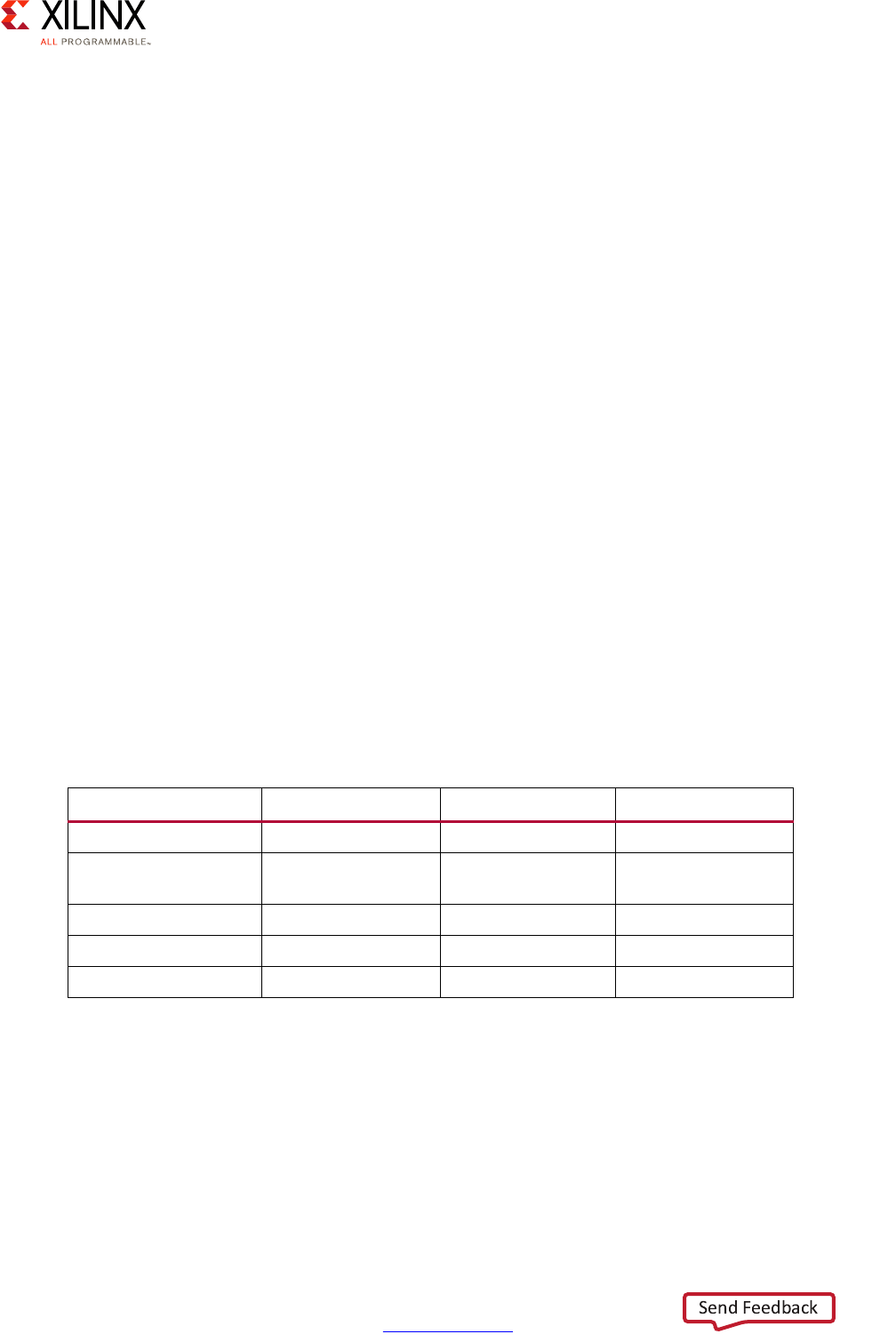

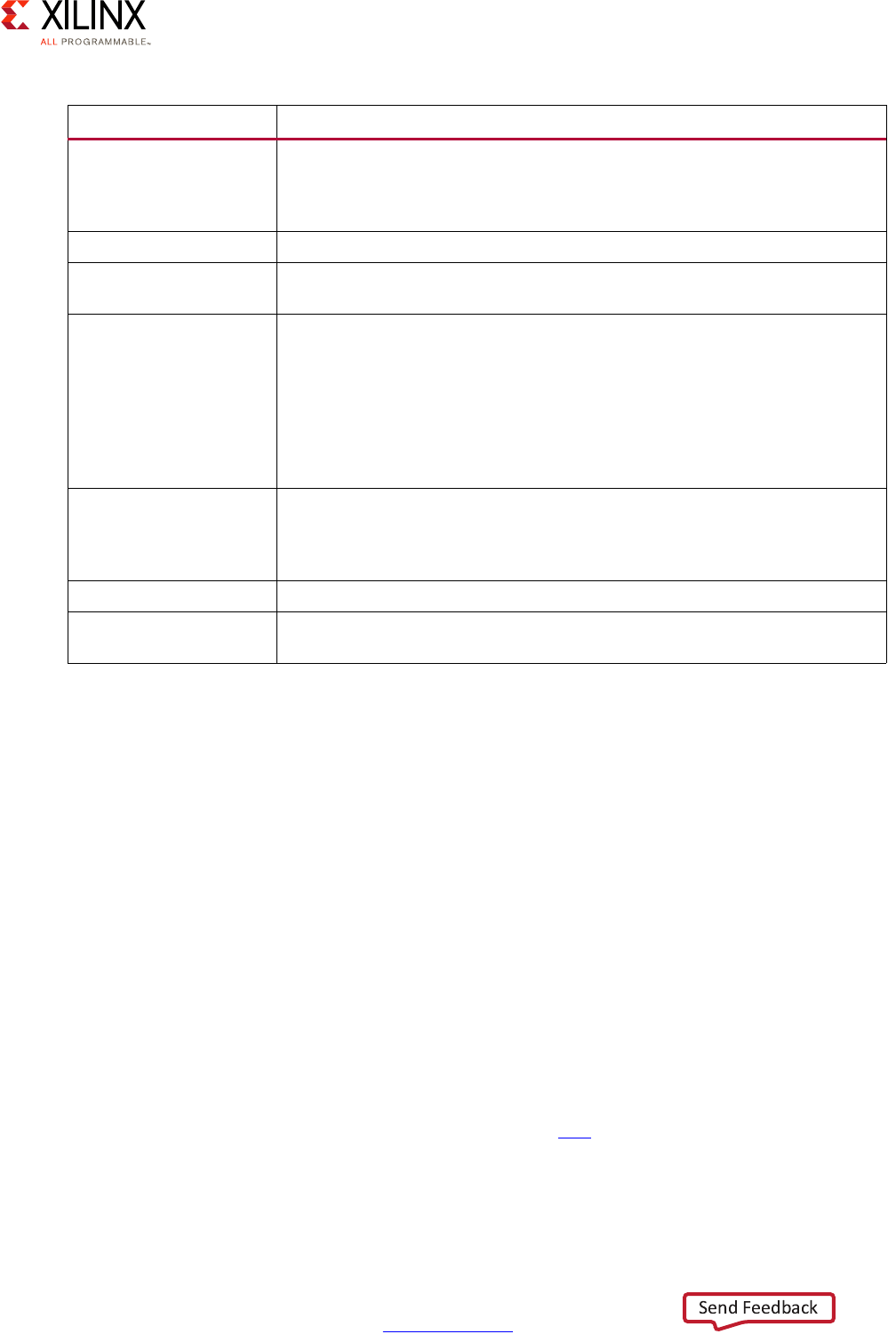

Table 2-1: Workload Candidates by Processing Block

Block Optimized For: Ideal For:

APU • High-level OS support such as Linux

• Hypervisor-based computing

• Symmetric Multi-Processing and Supervised

Asymmetric Multi-Processing

•HMI/UX

• Business logic

• Network/Cloud interaction

RPU • Deterministic Operation

• Low-Latency Memory Accesses

• Deterministic response time

software

• Safety-critical software

• Standards-compliant software

stack (ex: radio)

PL • Accelerating Applications • Acceleration / Parallelization

• Hardware-assisted effects,

transformations, filtering,

processing, encoding/decoding …

GPU • 2D and 3D Graphics Acceleration under Linux •Display

•Multimedia

Zynq UltraScale+ MPSoC Embedded Design Methodology Guide 16

UG1228 (v1.0) March 31, 2017 www.xilinx.com

Chapter 2: Processing System

The answers to the questions in the above diagram for each of your system's components

should be fairly straight forward. If you have continuous data streams or requests that need

to be constantly processed within certain time limits, chances are the programmable logic

(PL) is the best location to put the majority of your system's components involved in

processing those data streams or requests. If a workload doesn't fit that description but still

needs to respond deterministically (i.e. in real-time) to external events or if it's mission

critical then the RPU is probably a very good candidate. If it still doesn't fit that description

then it's probably a general-purpose computing problem that should either be taken care of

by the graphics processing unit (GPU) in case of graphics or the APU for everything else.

Still, even if on a first pass you determine that certain pieces of software should be handled

by the RPU or the APU, there might be further optimization opportunities for moving those

to the PL. If, for instance, the functionality to be achieved can be described as a fixed list of

mathematical equations, such as an FFT, and/or a known set of states or state machines,

especially if they can be run in parallel, it's probably a good candidate for embedding as

part of the PL.

One way to gauge whether moving certain functionality into the PL is beneficial is obviously

manual testing and prototyping. Xilinx, however, provides you with an even more effective

way of identifying and handling optimization candidates for the PL. Indeed, the SDSoC™

and Xilinx® SDK development tools can profile your application code and, in the case of

SDSoC, enable you to offload code sections to the PL for performance testing at the click of

a button. SDSoC will automatically compile the necessary logic into the PL, assign the

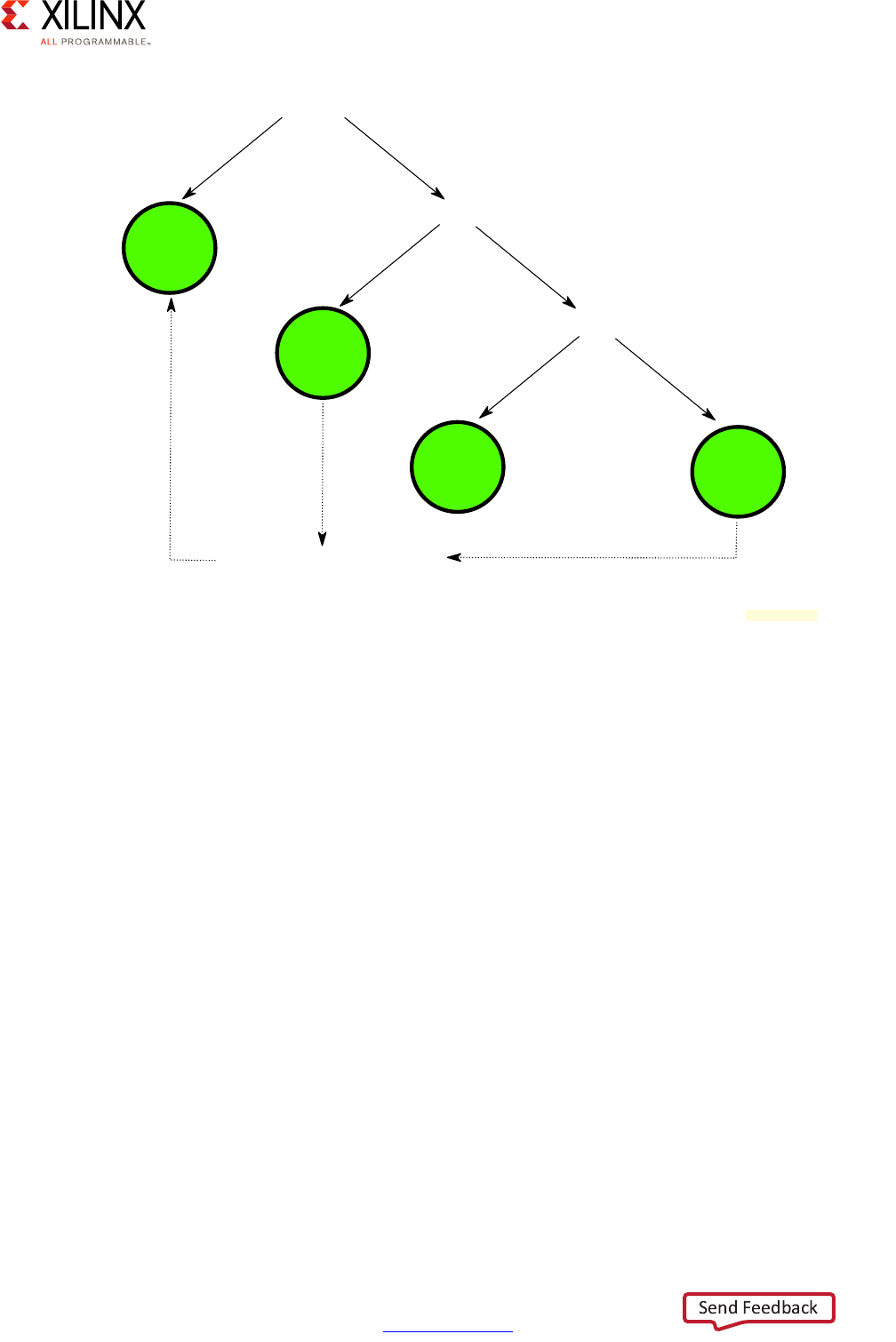

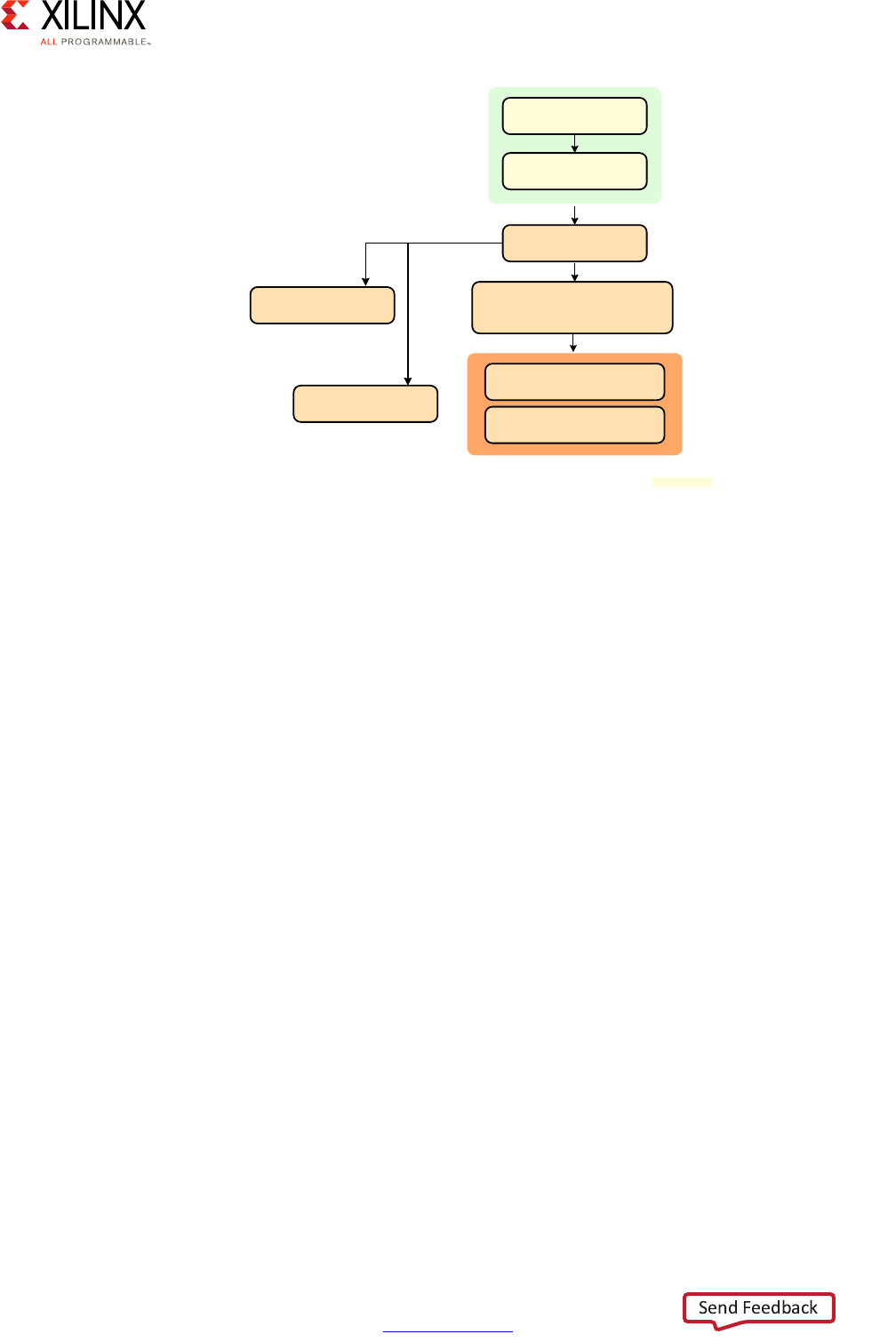

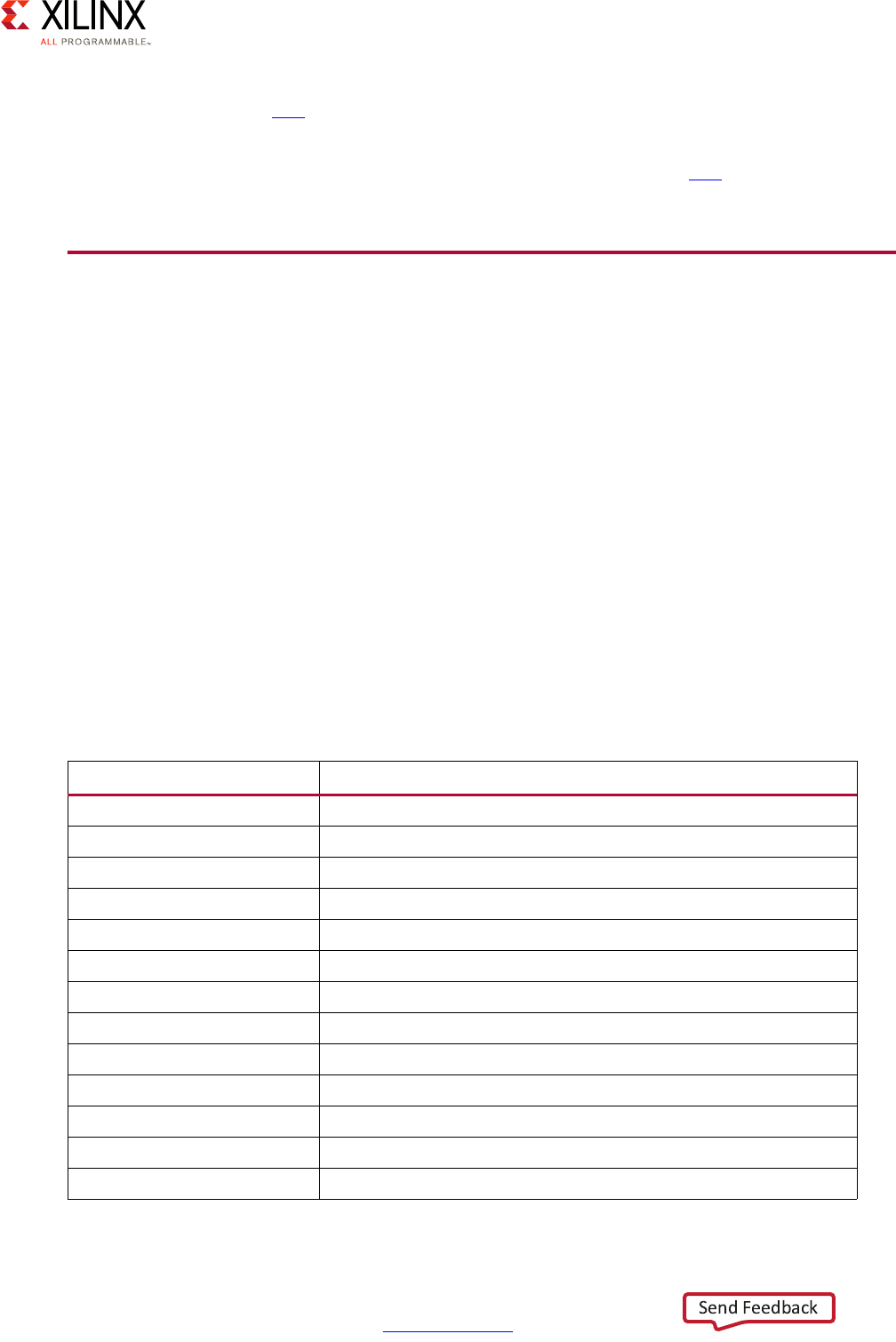

X-Ref Target - Figure 2-2

Figure 2-2: Processing Location Decision Tree

Is it continuously processing time-sensitive data?

PL

Yes No

Is it real-time or mission critical?

Yes No

RPU

Is it multimedia?

Yes No

GPU* APU

Can it be optimized to PL?

Yes

* GPU support is OS-specific

X18706-032117

Zynq UltraScale+ MPSoC Embedded Design Methodology Guide 17

UG1228 (v1.0) March 31, 2017 www.xilinx.com

Chapter 2: Processing System

necessary data movers and software drivers to enable the rest of your APU- or RPU-bound

software to transparently use the accelerated software portions. SDSoC therefore helps

streamline the software acceleration process by greatly simplifying all steps involved. The

use of SDSoC vs. manual offloading is therefore a trade off between ease of implementation

and hand-crafted performance tuning.

An additional aspect to keep in mind is the top clock speeds of the processing blocks:

• APU – Up to 1.5 GHz

• RPU – Up to 600 MHz

• GPU – Up to 667 MHz

Note: Keep in mind that those are top speeds. While each block can run at a maximum at those

speeds, it's very unlikely to be running at those speeds all the time nor will it necessarily make sense

for your design.

With its ARM® Cortex®-A53 processors, the APU is the fastest general purpose computing

resource on the Zynq UltraScale+ MPSoC device. At first glance it might therefore seem to

be the best candidate for workloads requiring maximum computing power, especially since

you can have up to four Cortex-A53 processors on the Zynq UltraScale+ MPSoC device.

Maximum frequency however does not necessarily mean best fit for function. The APU's

Cortex-A53 processors, for instance, are not as well suited to real-time workloads as the

RPU's ARM® Cortex®-R5 processor. Among many other factors, there's therefore a trade

off between performance and determinism in choosing between the APU and the RPU.

Once the most likely candidate blocks for housing a given functionality have been

identified, you still need to identify the best way to move data between blocks through the

interconnect and how each processing location interacts with the various processing

resources internal to the system as well as interfaces and resources within the outside

world. The interconnect and interrupt processing are discussed in detail later in this chapter.

For all aspects related to peripheral I/O, refer to Chapter 10, Peripherals. For information

regarding the Memory, refer to Chapter 6, Memory. For more information regarding the

PL's capabilities, including its built-in accelerators, refer to Chapter 5, Programmable Logic.

Note that while the present guidelines might prescribe a given recommended processing

block, it's entirely possible that after reviewing the entire set of content related to a given

part of your design that an alternate, better-suited configuration might become evident to

best fit your specific product needs. The decision tree presented earlier, for example,

recommended using the RPU for your real-time software. Your design might, instead, call

for running a real-time operating system (RTOS) on the APU with the Cortex-R5 processors

being run bare-metal. Another example is network communications. The above

recommendations categorize network communication as being best slated for the APU. Yet,

the PL contains integrated blocks for 100G Ethernet and PCIe which, together, can be used

to efficiently accomplish network-related tasks that would typically be designated for the

APU. The Xilinx White Paper Unleash the Unparalleled Power and Flexibility of Zynq

UltraScale+ MPSoCs (WP470) [Ref 10] describes the flexibility of the Zynq UltraScale+

MPSoC outlines such an example use-case for a data center application. It also covers two

Zynq UltraScale+ MPSoC Embedded Design Methodology Guide 18

UG1228 (v1.0) March 31, 2017 www.xilinx.com

Chapter 2: Processing System

more example uses-cases, namely a central Advanced Driver Assistance System (ADAS)

module and software-defined radio (SDR), which are likely to help you get a better

understanding of how to partition your design's processing.

Yet another important aspect to keep in mind when determining where to conduct any

given part of your design's processing is power management. The architecture of the Zynq

UltraScale+ MPSoC device allows fine-grained control over power management. The power

domains illustrated in Figure 2-1, page 14 are part of this power management as is detailed

in Chapter 4, Power Considerations. Whenever you decide to run a given workload on a

given Zynq UltraScale+ MPSoC device block, keep in mind how this choice relates to your

power management needs. If, for example, a key algorithm runs on the APU and yet you

would like to have the APU be powered down during certain periods of time then you might

want to move that algorithm to either the PL or the RPU. The APU might be the most

powerful block in the system, as was explained earlier, but it also happens to be the one

that can consume the most power.

Heterogeneous Computing Concepts

Understanding the processing capabilities of the Zynq UltraScale+ MPSoC device and the

surrounding functionality calls on several key concepts not typically used outside the field

of heterogeneous computing. This is therefore a quick introduction to some terminology

you will find being used throughout this guide and the rest of the Zynq UltraScale+ MPSoC

device documentation.

The Zynq UltraScale+ MPSoC device includes two main layers of multi-processing

components (i.e. processors working in parallel to each other.) At the first layer, there are

the main processing blocks:

•APU

•RPU

•PL

•GPU

At the second layer, there are the processing units within those blocks:

• Dual or Quad Cortex-A53 cores within the APU

• Dual Cortex-R5 processor cores within the RPU

• PL-optimized applications and/or MicroBlaze™ processor instances within the PL

• Graphics processing pipelines in the GPU

The relationship between the main components of the Zynq UltraScale+ MPSoC device is

generally considered “asymmetrical.” That is, each of the APU, RPU, PL, and GPU have

Zynq UltraScale+ MPSoC Embedded Design Methodology Guide 19

UG1228 (v1.0) March 31, 2017 www.xilinx.com

Chapter 2: Processing System

different capabilities and constraints, they do not necessarily share a common OS, nor can

a workload be seamlessly moved between those blocks. Instead, designers who want to use

one of these components for a certain workload must tailor that workload specifically for

that component. This is what is called Asymmetric Multi-Processing (AMP).

Within the APU, the relationship between the Cortex-A53 processors can take four different

forms.

• If all the Cortex-A53 processor cores are used to run a single common OS such as Linux,

which is another recommended configuration, then they are said to have a

“symmetrical” relationship to one another. In this case, the common OS, Linux, can

dispatch and move workloads -- effectively OS processes -- between processors

transparently. From a software development point of view the OS API boundaries

guarantee that the software will operate just the same no matter which Cortex-A53

processor it runs on. This type of operation is known as Symmetric Multi-Processing

(SMP).

• If the Cortex-A53 processors are still operated independently, but a hypervisor such as

the open source Xen or various commercial offerings is used to coordinate their

combined operation, they would be considered as having a “supervised” asymmetrical

relationship to each other. That is, the hypervisor acts as a supervisor between the

Cortex-A53 processors and ensures there is a commonly-agreed upon arbitrator

between the independent software stacks running in parallel on the Cortex-A53

processors. Supervised AMP mode for the APU is one of the recommended

configurations in Chapter 3, System Software Considerations for certain types of

applications.

• The APU hardware should also permit a hybrid configuration. A hypervisor can be used

to segment the Cortex-A53 processors in supervised AMP mode while a subset of those

cores can be managed collectively by a single OS image in SMP mode. This however is

an advanced configuration that is neither provided nor supported by Xilinx.

• If the Cortex-A53 processors are operated independently, each running different

system software without a common OS or hypervisor between them, they too would be

considered as having an asymmetrical relationship to one another. More specifically,

they would be said to be running in “unsupervised” AMP mode, indicating that there is

no single software coordinating the operation of the Cortex-A53 processors. Note,

however, that due to the complexities of a supervised AMP configuration on the

Cortex-A53 processors, this is neither a recommended nor a Xilinx-supported

configuration for the APU, as is explained in Chapter 3, System Software

Considerations.

Finally, the APU hardware should also permit a hybrid configuration. A hypervisor can be

used to segment the Cortex-A53 processors in supervised AMP mode while a subset of

those cores can be managed collectively by a single OS image in SMP mode. This however

is an advanced configuration that is neither provided nor supported by Xilinx.

Zynq UltraScale+ MPSoC Embedded Design Methodology Guide 20

UG1228 (v1.0) March 31, 2017 www.xilinx.com

Chapter 2: Processing System

Overall, because the Zynq UltraScale+ MPSoC device combines many different types of

processors and processor cores in a single device, it is referred to as providing

“heterogeneous” computing. Being such a type of device, the Zynq UltraScale+ MPSoC

device enables the many processors and processor sets to relate to the other blocks or

processors within the same block in the various ways just described.

The following is a quick recap of the previous explanation:

• SMP: When processing cores within the APU are managed by a single OS

• AMP: When processing blocks operate independently of one another

°Supervised: When there's a hypervisor coordinating AMP blocks

°Unsupervised: When there isn't a single arbiter between AMP blocks

• Heterogeneous computing: combining different processor types in the same device

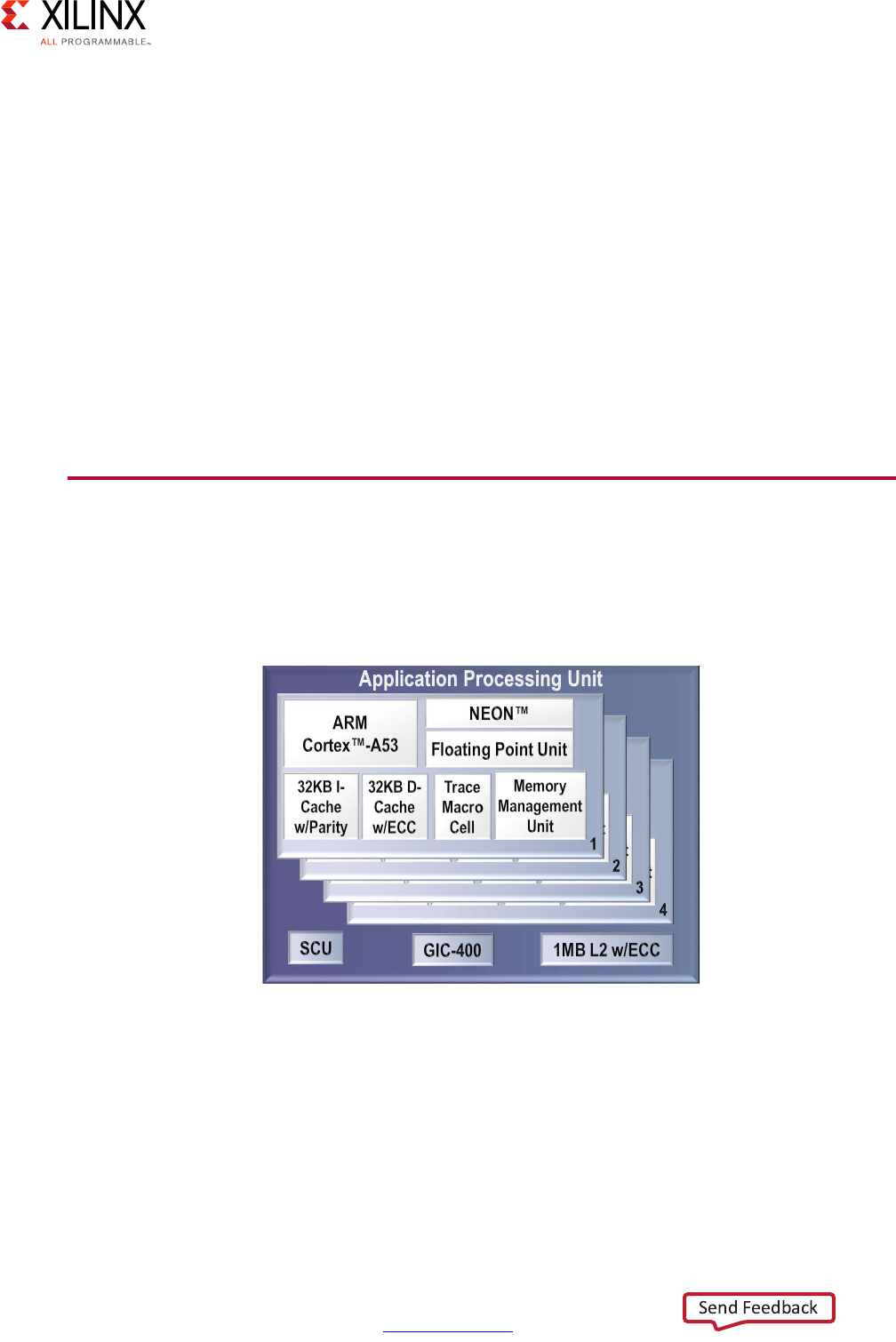

Application Processing Unit (APU)

The APU on the Zynq UltraScale+ MPSoC device includes Dual or Quad Cortex-A53

processors, depending on the specific Zynq UltraScale+ MPSoC device model you are

using.

Each of the Cortex-A53 processor cores provides, among many other features:

• ARMv8-A architecture support

• 64 or 32 bit operation

• Up to 1.5GHz performance

• Independent Memory Management Unit (MMU)

X-Ref Target - Figure 2-3

Figure 2-3: Application Processing Unit (APU) Block Diagram

Zynq UltraScale+ MPSoC Embedded Design Methodology Guide 21

UG1228 (v1.0) March 31, 2017 www.xilinx.com

Chapter 2: Processing System

• Dedicated L1 cache

• Individual power gating

• ARM TrustZone support

• VFPv4 FPU Implementation

• NEON and Crypto API support

Note: Refer to the Zynq UltraScale+ MPSoC device datasheet and Zynq UltraScale+ MPSoC Technical

Reference Manual (UG1085) [Ref 7] for the full list of features.

The Cortex-A53 processors therefore provide the same high-end general-purpose

computing capabilities required to run high-end general purpose applications and OSes as

other computing platforms as discussed in Chapter 3, System Software Considerations.

Note that while the Cortex-A53 processors are mostly independent, some of the APU's

resources, including its Global Interrupt Controller (GIC) covered below, must be managed

coherently for all Cortex-A53 processors in order for the APU to operate correctly.

Here is a more detailed view of the APU:

X-Ref Target - Figure 2-4

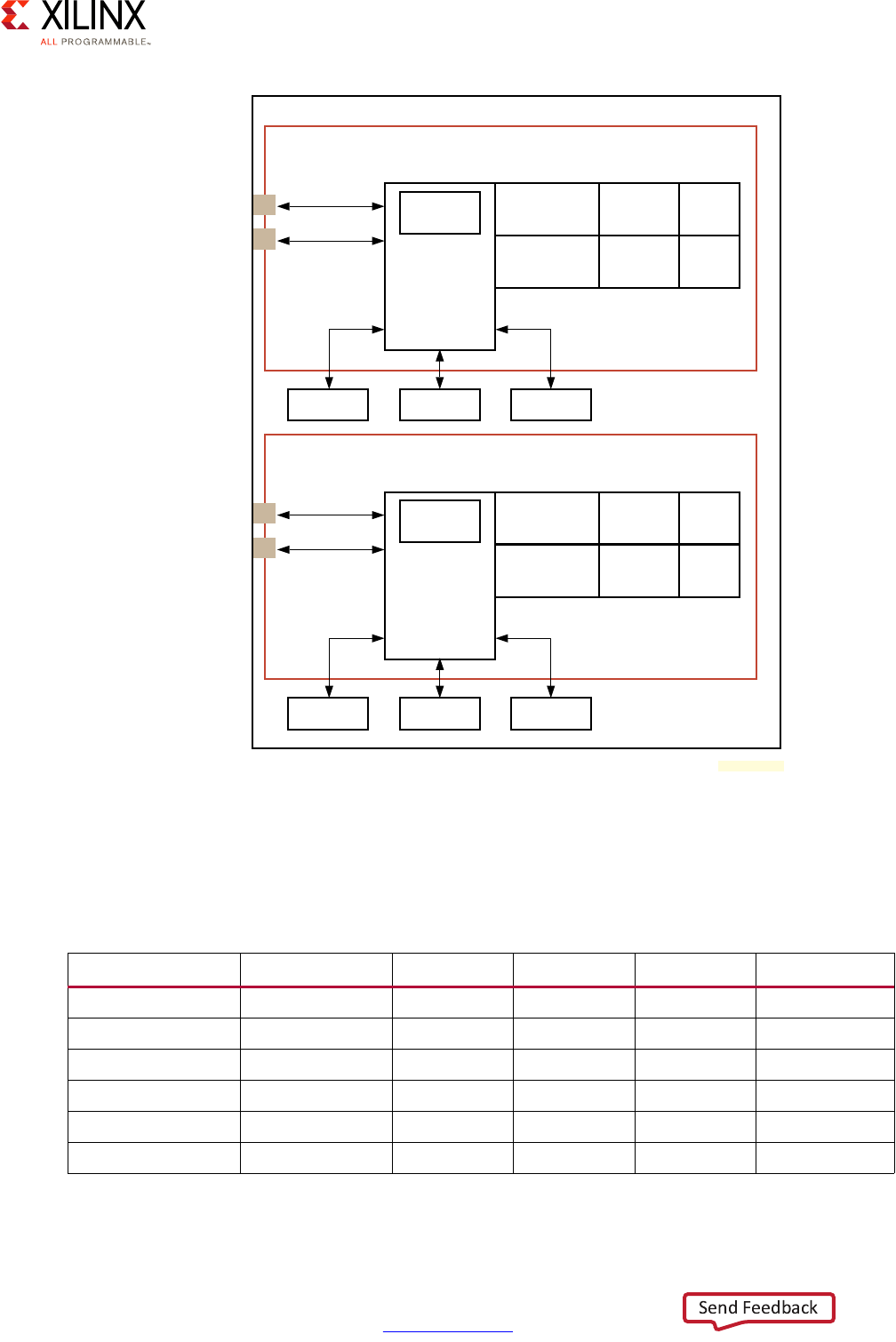

Figure 2-4: Detailed APU Block Diagram

APU

GIC

Cortex-A53 MPCore

Cortex-A53

FPU/NEON/Crypto

32K L1

ICache

32K L1

DCache

Debug/

Timers

Cortex-A53

FPU/NEON/Crypto

32K L1

ICache

32K L1

DCache

Debug/

Timers

Cortex-A53

FPU/NEON/Crypto

32K L1

ICache

32K L1

DCache

Debug/

Timers

Cortex-A53

FPU/NEON/Crypto

32K L1

ICache

32K L1

DCache

Debug/

Timers

Snoop Control Unit (SCU)

L2 Cache 1MB

IRQ/vIRQ

FIQ/vFIQ

Timers

Interrupts

System Counter

(in LPD) FPD Core Switch CoreSight

SPI Interrupts

64-bit counter

APB, TS

ATB

32-bit AXI

128-bit ACP

CCI

128-bit ACE

;

Zynq UltraScale+ MPSoC Embedded Design Methodology Guide 22

UG1228 (v1.0) March 31, 2017 www.xilinx.com

Chapter 2: Processing System

For more information regarding the software operation of the APU, refer to Chapter 3,

System Software Considerations and Zynq UltraScale+ MPSoC: Software Developers Guide

(UG1137) [Ref 5]. The rest of this section will focus on the APU's processing capabilities.

SMP on the APU

Xilinx provides an open source Linux that contains a kernel that is SMP aware and can be

further configured to the developer's needs. The kernel configuration allows the developer

to specify the number of CPU cores that can be used for the OS' SMP feature. Selecting a

number below the amount of available cores, and deploying with an underlying hypervisor

will keep one or more cores free for other applications such as custom bare-metal

applications created through the Xilinx SDK.

Unsupervised AMP on the APU

Unsupervised AMP on the APU implies handing control over to the first processor, which in

turn boots specific OS and runs workloads on other processors. In this working mode,

resource sharing is left for the developer to implement. As was mentioned earlier, due to

subtle complexities of implementation, this is neither a Xilinx recommended nor supported

use case of the Zynq UltraScale+ MPSoC device.

Supervised AMP on the APU

A hypervisor can be used on the APU for deploying different OSes or bare-metal workloads

on the available cores. Depending on the hypervisor itself and specific customer needs, it

can typically be used to manage resource sharing in a way that is either transparent (full

virtualization) or semi-transparent (paravirtualization) to the guests it runs. Virtualization

support is the subject of the next section.

64 or 32 Bit Operations

The Cortex-A53 processor is compatible with the ARMv8 specification which means it has

the capacity to operate in 64 bit (AArch64) and 32 bit (AArch32) execution modes. The

limitations of each mode are the same as those inherent the ARM architecture. The AArch32

execution mode on the Zynq® UltraScale+™ MPSoC is compatible with the Zynq 7000

device family and the ARMv7 specification, and has been extended to support some of

ARMv8 features like SIMD and the cryptographic extension. The choice of using either

execution mode generally depends on the software meant to run on the device.

Zynq UltraScale+ MPSoC Embedded Design Methodology Guide 23

UG1228 (v1.0) March 31, 2017 www.xilinx.com

Chapter 2: Processing System

In hypervisor mode, the choice of whether to use AArch32 and AArch64 is dictated by what

execution state is used by the hypervisor.

• A 64 bit hypervisor can run an operating system kernel running in AArch64 or AArch32

mode. Note that an OS kernel running as AArch32 can only run applications in AArch32

execution mode, even if said kernel is running within a hypervisor in AArch64 mode.

• A 32 bit hypervisor is limited to AArch32 OSes and applications.

APU Virtualization Support

The Zynq UltraScale+ MPSoC device supports hardware virtualization through 4 key

components. Several are already being used by system software designed for the Zynq

UltraScale+ MPSoC device, such as the Xen hypervisor. Understanding those capabilities

will enable you to more effectively design your system around the Zynq UltraScale+ MPSoC

device.

Cortex-A53 Processor Virtualization

Support for virtualization on the APU is typically implemented through one of the Exception

Levels (ELs) defined in the ARMv8 architecture specification of the Cortex-A53 processor.

There are 4 ELs supported by ARMv8 and EL2 can be used by supported hypervisors to

isolate the hypervisor context from the guest OSes. The Cortex-A53 processor's ELs are

discussed in detail as part of the ARM TrustZone section in Chapter 7, Resource Isolation

and Partitioning.

Zynq UltraScale+ MPSoC Embedded Design Methodology Guide 24

UG1228 (v1.0) March 31, 2017 www.xilinx.com

Chapter 2: Processing System

Interrupt Virtualization

Interrupt virtualization is discussed in APU Interrupt Virtualization, page 39.

X-Ref Target - Figure 2-5

Figure 2-5: TrustZone's Exception Levels

Non-secure State

App1

AArch64 or

AArch32(1)

App2

AArch64 or

AArch32(1)

App1

AArch64 or

AArch32(1)

App2

AArch64 or

AArch32(1)

Supervisor (Guest OS1)

AArch64 or AArch32(2)

Supervisor (Guest OS2)

AArch64 or AArch32(2)

Hypervisor Mode

AArch64 or AArch32

EL0

EL1

EL2

SVC

HVC

SMC

Secure Monitor Mode

Supervisor (Secure OS)

AArch64 or AArch32

Trusted App1

AArch64 or

AArch32(1)

Trusted App2

AArch64 or

AArch32(1)

EL3

Secure State

X15288-032917

Zynq UltraScale+ MPSoC Embedded Design Methodology Guide 25

UG1228 (v1.0) March 31, 2017 www.xilinx.com

Chapter 2: Processing System

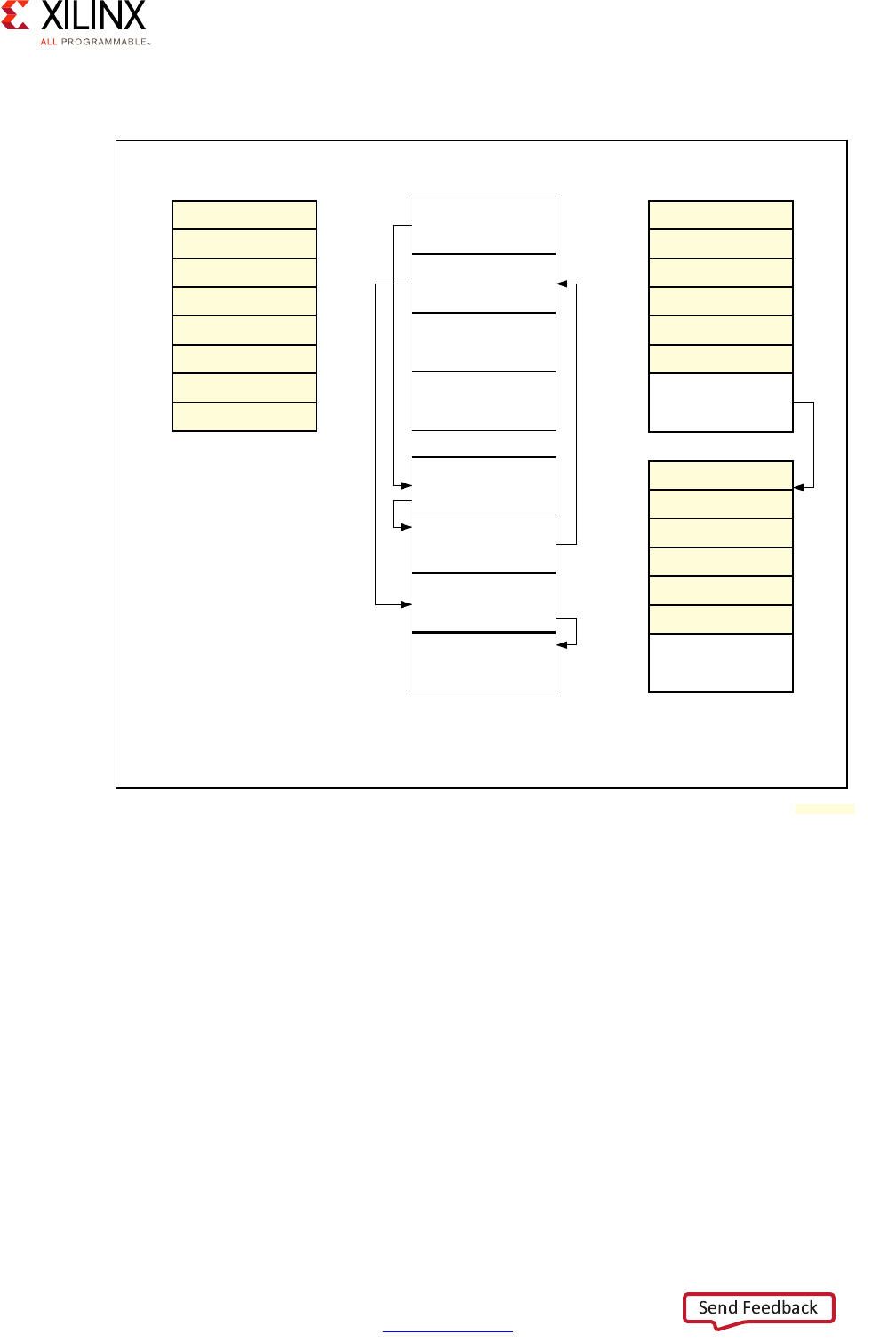

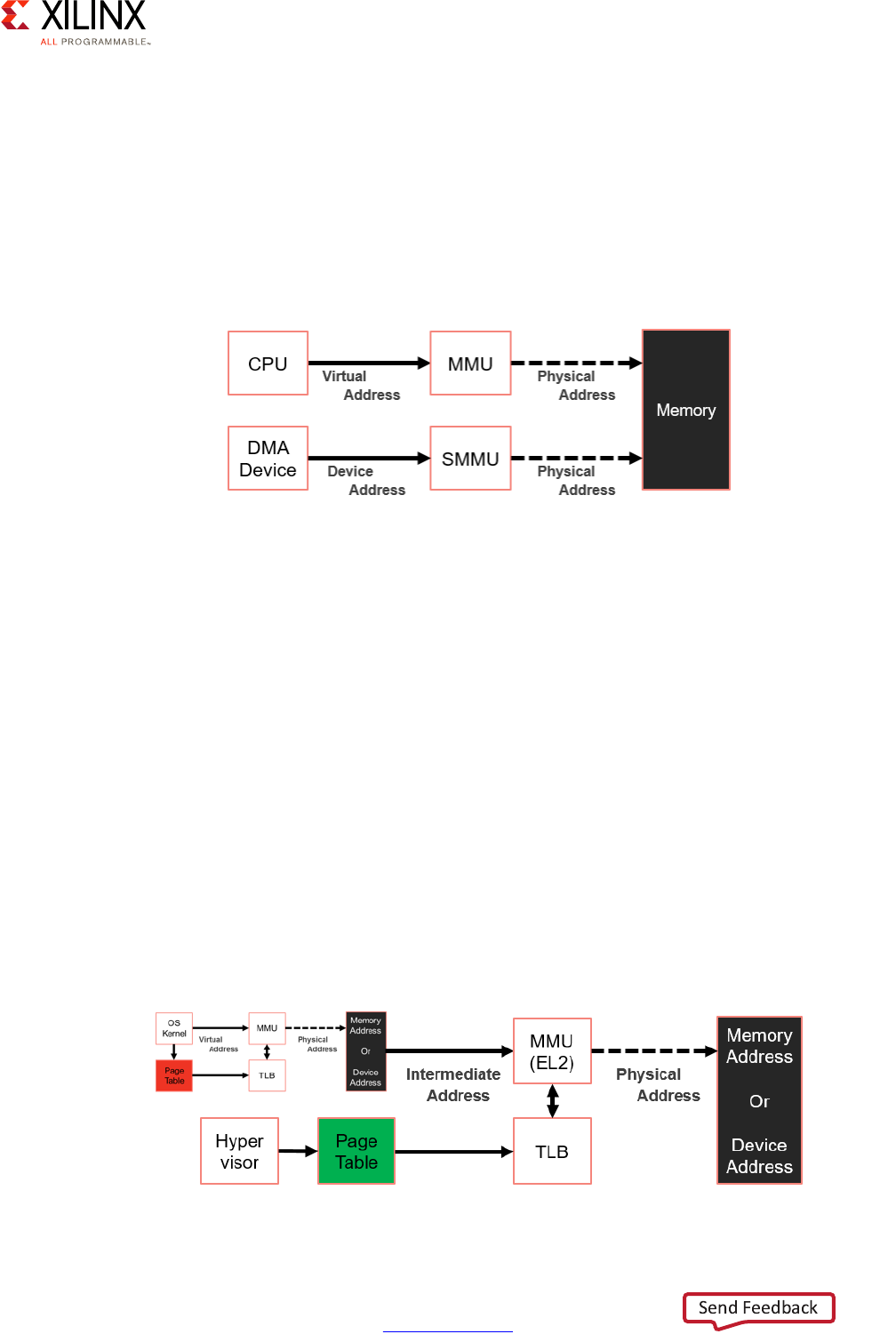

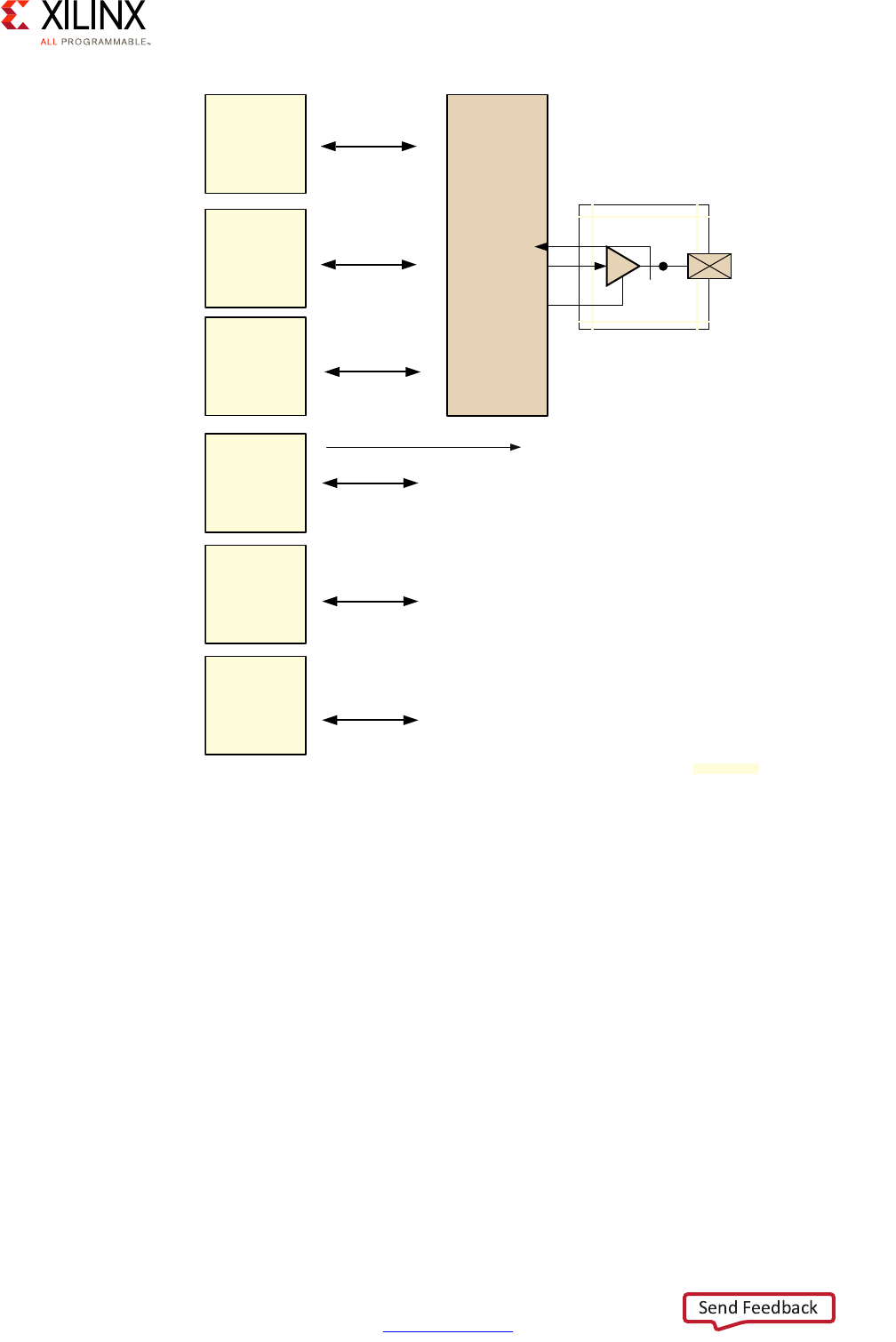

System MMU for I/O Virtualization

The System MMU (SMMU) simplifies the virtualization of addresses for I/O and hypervisor

use by automating address translation based on software-managed tables. The following

figure illustrates one example of the SMMU's virtualization of addresses:

The SMMU can operate in two stages, as illustrated above as "S1," "S2," or "S1/S2:”

•Stage 1:

This stage operates like a traditional single-stage CPU MMU. It takes Virtual Addresses

(VAs) and translates them to Intermediate Physical Addresses (IPAs).

•Stage 2:

In a hypervisor environment, this stage simplifies the hypervisor's design by enabling

guest OSes to directly configure DMA capable devices in the system without having to

interface with the hypervisor for those requests. In this stage, it takes IPAs and converts

to Physical Addresses (PAs).

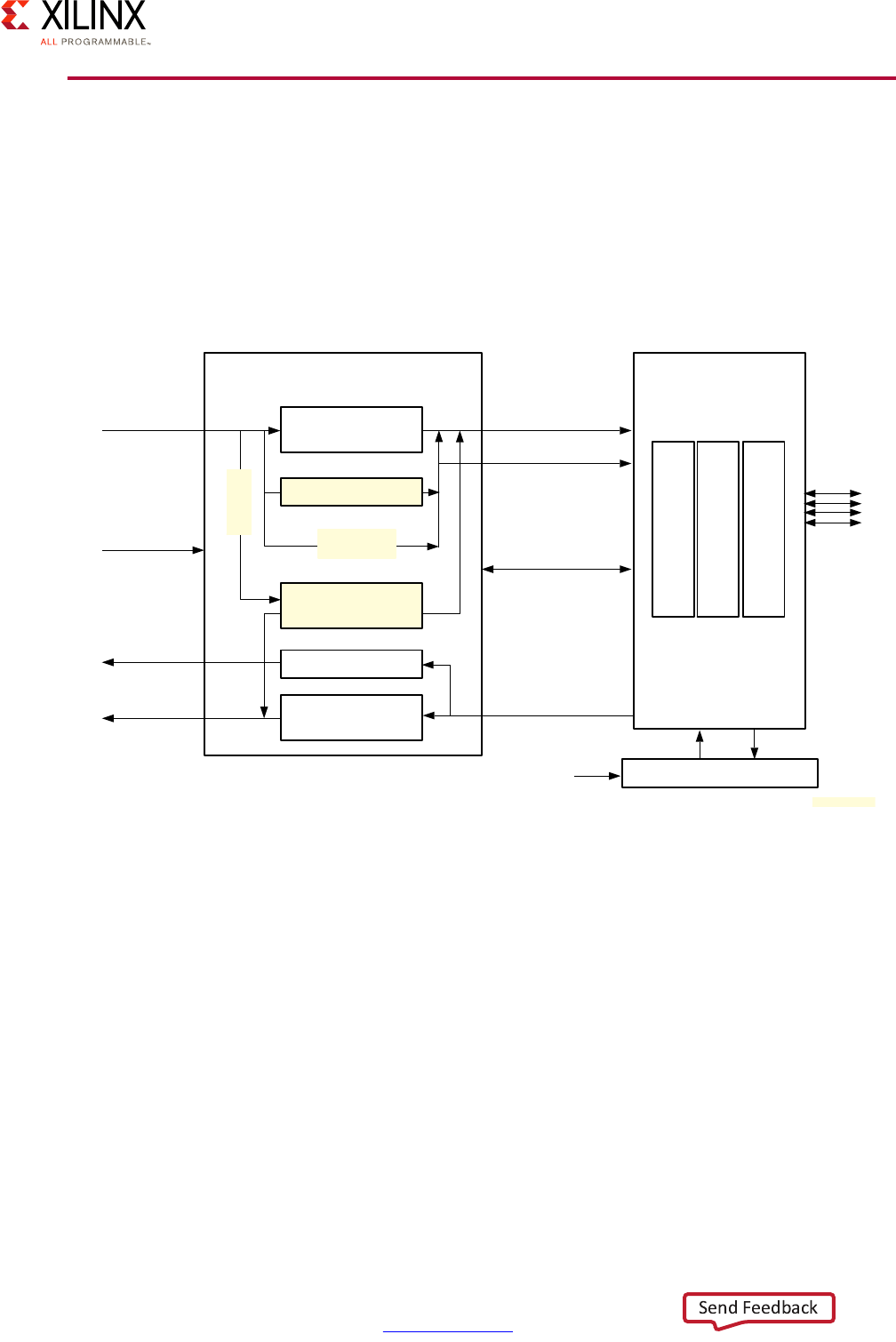

X-Ref Target - Figure 2-6

Figure 2-6: Example Use of the SMMU

Cache Coherent Interconnect

Memory

Device1 Device2

Interconnect

Device3 Device<n>

Interconnect

SMMU S2 SMMU S1/2 SMMU S1/2

CPU0 CPU1-3

MMU S1/2

L1 Cache

MMU S1/2

L1 Cache

GPU

MMU S1

Cache

Coherent Interconnect

L2 Cache

X15290-092916

Zynq UltraScale+ MPSoC Embedded Design Methodology Guide 26

UG1228 (v1.0) March 31, 2017 www.xilinx.com

Chapter 2: Processing System

The following figure illustrates the SMMU's virtualization of addresses in a hypervisor

environment:

The SMMU has the following key components that interface with the Zynq UltraScale+

MPSoC device Interconnect described in the next section:

• Translation Buffer Unit (TBU): For translating addresses

• Translation Control Unit (TCU): For controlling and managing address translation

Chapter 7, Resource Isolation and Partitioning discusses this topic further.

Peripheral Virtualization

In general, the SMMU can be used by the hypervisor to allow guests to exclusively own a

DMA-capable device. In system designs that wish to share a single hardware device across

multiple guest OSes, a technique called paravirtualization is used. This requires new device

drivers for all OSes that want to share the device. The throughput of a shared device is

accordingly less than an exclusively-owned device, as mapped by the SMMU.

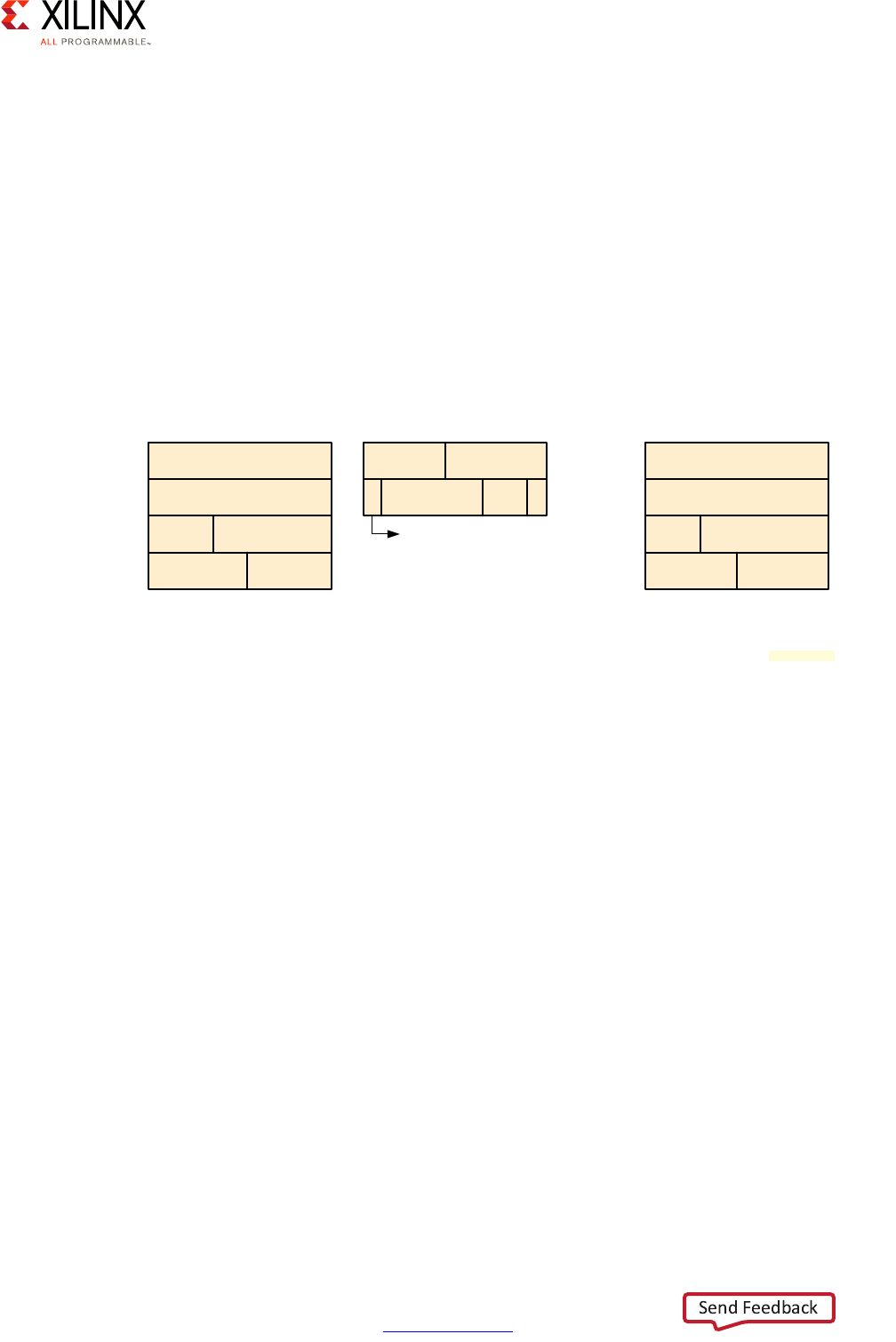

X-Ref Target - Figure 2-7

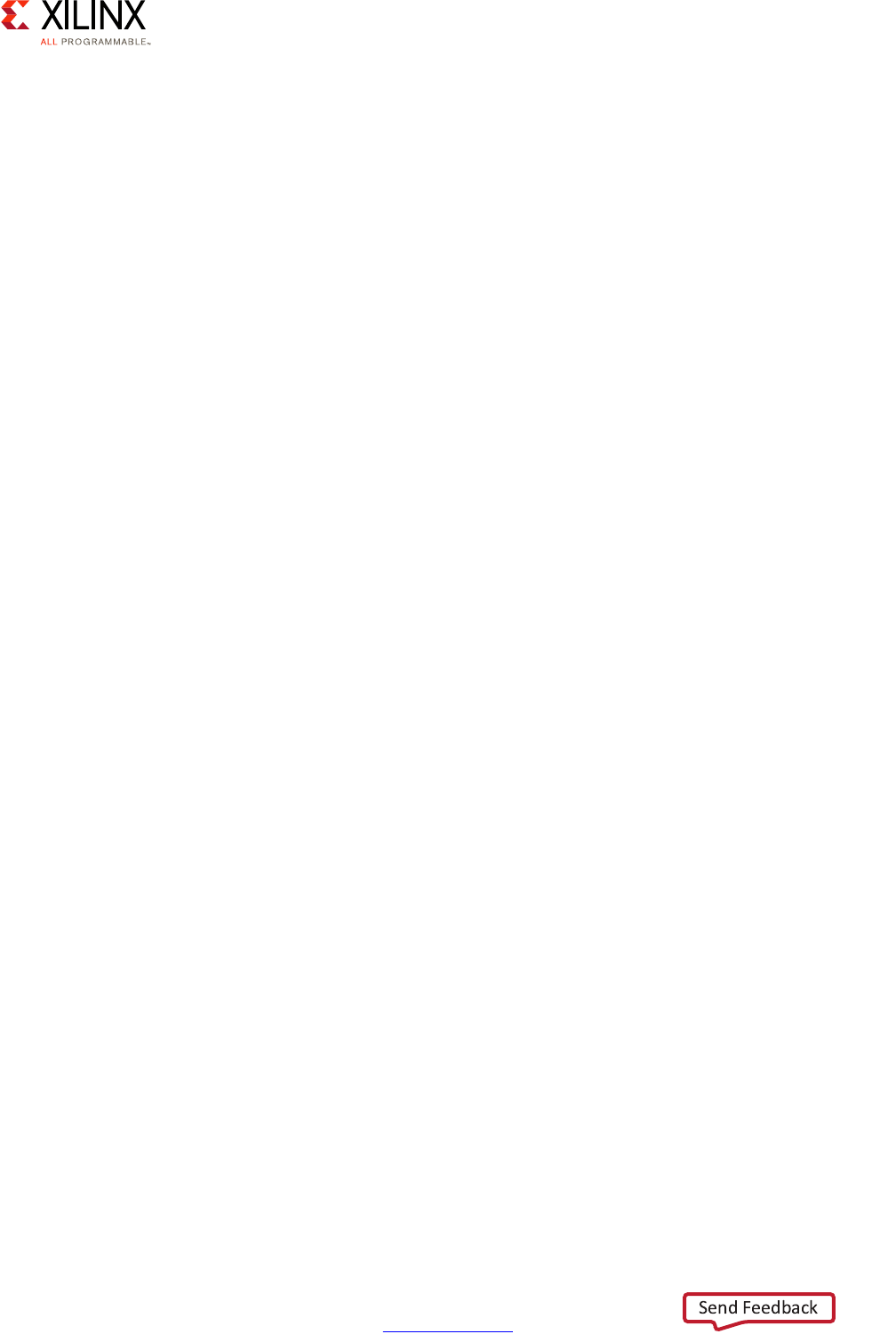

Figure 2-7: SMMU Address Translation Stages

B2

A0A1

Guest OS0

B0B1

Guest OS0

Hypervisor

CPU

MMU

Memory

Accessing

Devices

System Memory

SystemMMU

B2

Guest OSm

4GB

B2

B2

0

4GB

Stoge 1 Address Translation

Under control

of Guest OS

A2

A2

Guest OSm

4GB

A2

A2

0

Stage 1 Address Translation

Intermediate Physical

Address (IPA) Space

Virtual Address

(VA) Space

4GB

B2

4GB

A2

B2

A2

Stage 2 Address Translation

Physical Address

(PA) Space

0

0

X15291-032917

Zynq UltraScale+ MPSoC Embedded Design Methodology Guide 27

UG1228 (v1.0) March 31, 2017 www.xilinx.com

Chapter 2: Processing System

Timer Virtualization

The ARM processor includes generic hardware timers for various tasks. One timer calculates

the global passing of time for the system. This generic timer is associated with a counter

incremented at a rate which depends on the system setting or the CPU frequency. Each CPU

core contains a physical counter which contains the system counter value. Each CPU core

also has a virtual counter that indicates virtual time. This virtual counter is saved and

paused when a virtual machine gets interrupted and the control returns to the hypervisor.

Access to the counter values can be controlled by the OS depending on the execution

levels.

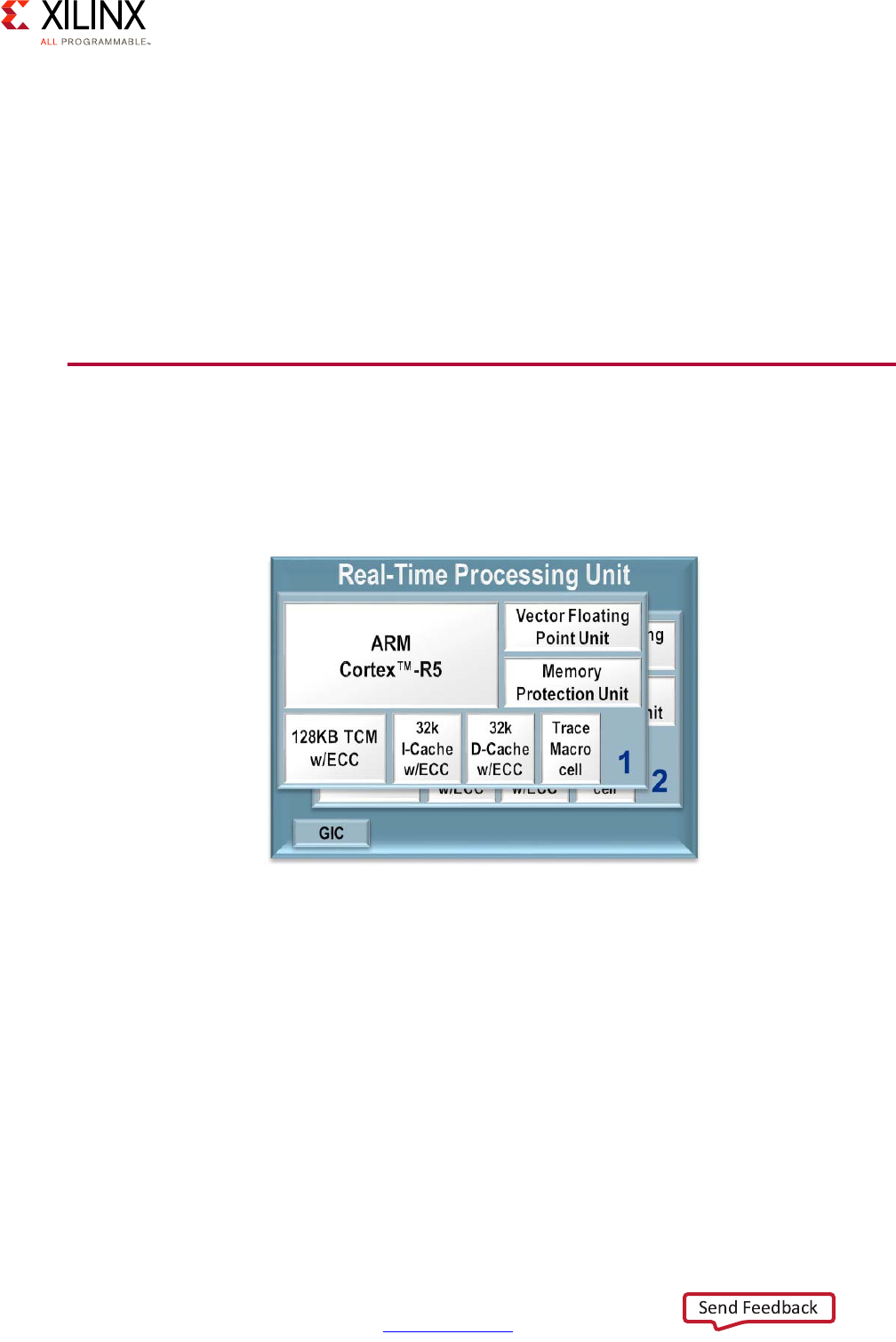

Real-Time Processing Unit (RPU)

The Zynq® UltraScale+™ MPSoC comes equipped with two Cortex-R5 processors which are

typically used for operations requiring deterministic low-latency operations and

response-time critical applications:

Each Cortex-R5 processor provides, among other features:

• ARMv7-R architecture support

•32-bit operation

• Up to 600MHz performance

• Dedicated L1 cache

• 128KB of Tightly-Coupled Memory (TCM) with error-correcting code (ECC)

• Single and double-precision FPU

X-Ref Target - Figure 2-8

Figure 2-8: Real-Time Processing Unit (RPU) Block Diagram

Zynq UltraScale+ MPSoC Embedded Design Methodology Guide 28

UG1228 (v1.0) March 31, 2017 www.xilinx.com

Chapter 2: Processing System

Note that like the case of the APU, while the Cortex-R5 processors can be operated

independently, some of the resources of the RPU, including the Global Interrupt Controller

discussed further below, must be managed coherently for both Cortex-R5 processors in

order for the RPU to operate correctly.

The Cortex-R5 processors can be set to operate in two different modes depending on your

needs:

• Split Mode / Unsupervised AMP:

Also known as the Performance Mode, this is the default mode of the Cortex-R5

processors. In this mode, each core operates independently, except for the interrupt

controller as was just explained. In split mode, one core may be running an RTOS while

another could be running bare-metal, or both could be running different RTOSes. Unlike

the APU, all such configurations in this mode would be considered as unsupervised AMP

as the Cortex-R5 processor cannot support a hypervisor. Communication between the

Cortex-R5 processors could be done by simply passing interrupts and sharing memory

with Xilinx bare-metal libraries or enabling a range of advanced features by means of

advanced features available in OpenAMP framework. Refer to the Zynq UltraScale+

MPSoC OpenAMP: Getting Started Guide (UG1186) [Ref 8] for more information.

•Lock-Step Mode:

Also known as the Safety Mode, in this mode of operation the Cortex-R5 processors acts

as a single CPU with regards to the rest of the system. Internally, however, the cores are

processing the same instruction in parallel; delayed by 1 ½ clock cycles to allow

detection of single event upsets. Should output from the two cores differ, the

comparison and synchronization logic would detect and signal the error for a

subsequent, custom response. For example tampering could cause the lock-step cores

to get out of sync and, in response to this, you could decide to shut down or lock out the

system. The following diagram illustrates the operation of the RPU in this mode:

X-Ref Target - Figure 2-9

Figure 2-9: Lock-Step of the Cortex-R5 Processors

TCMs Associated

with CPU1

TCM A

TCM B

TCMs Associated

with CPU0

TCM A

TCM B

ShimShim

GIC

Cortex-R5

CPU0

Cortex-R5

CPU1

Caches Associated

with CPU0

D-Cache

I-Cache

Comparison and Synchronization Logic

X15295-092916

Zynq UltraScale+ MPSoC Embedded Design Methodology Guide 29

UG1228 (v1.0) March 31, 2017 www.xilinx.com

Chapter 2: Processing System

If your application is mission critical or if you require functional safety with detection of

single event upsets, then lock-step mode is likely preferable. If, on the other hand, you

would like to benefit from the full performance made possibly by having two Cortex-R5

processors available for your application, the default split mode is best.

As is explained in Chapter 4, Power Considerations, the Cortex-R5 processor are part of

what is known as a “power island” and can be gated together. They cannot, however, be

power gated individually.

The RPU is discussed in more detail in the Real-Time chapter.

Interconnect

The Zynq UltraScale+ MPSoC device's Interconnect is at the heart of its heterogeneous

architecture. It links together all of the processing blocks together and enables them to

interface with the outside world through access peripherals, devices and memory. It's

therefore fundamental to understand its functionality in order to best tune your system.

The Zynq UltraScale+ MPSoC device's interconnect is based on ARM's Advanced eXtensible

Interface (AXI) defined as part of ARM's Advanced Microcontroller Bus Architecture (AMBA)

4.0 specification, and incorporates many other related ARM technologies such as Cache

Coherent Interconnect (CCI-400) and CoreLink NIC-400 Network Interconnect. ARM

describes those technologies in great detail in the corresponding specifications and

documentation it makes available. For the purposes of the present discussion, however, a

brief introduction to the relevant core concepts will prove helpful in determining how to

tweak the Zynq UltraScale+ MPSoC device's interconnect to your needs.

AXI Interfaces

The primary mechanism for linking any pair of blocks within the Zynq UltraScale+ MPSoC

device is an AXI interface. At its most basic level, an AXI interface is specified as linking an

AXI Master to one or more AXI Slaves. The master issues the requests that the slave(s) needs

to fulfill. Each AXI interface consists of five different channels:

• Read Address Channel

• Write Address Channel

• Read Data Channel

• Write Data Channel

• Write Response Channel

Zynq UltraScale+ MPSoC Embedded Design Methodology Guide 30

UG1228 (v1.0) March 31, 2017 www.xilinx.com

Chapter 2: Processing System

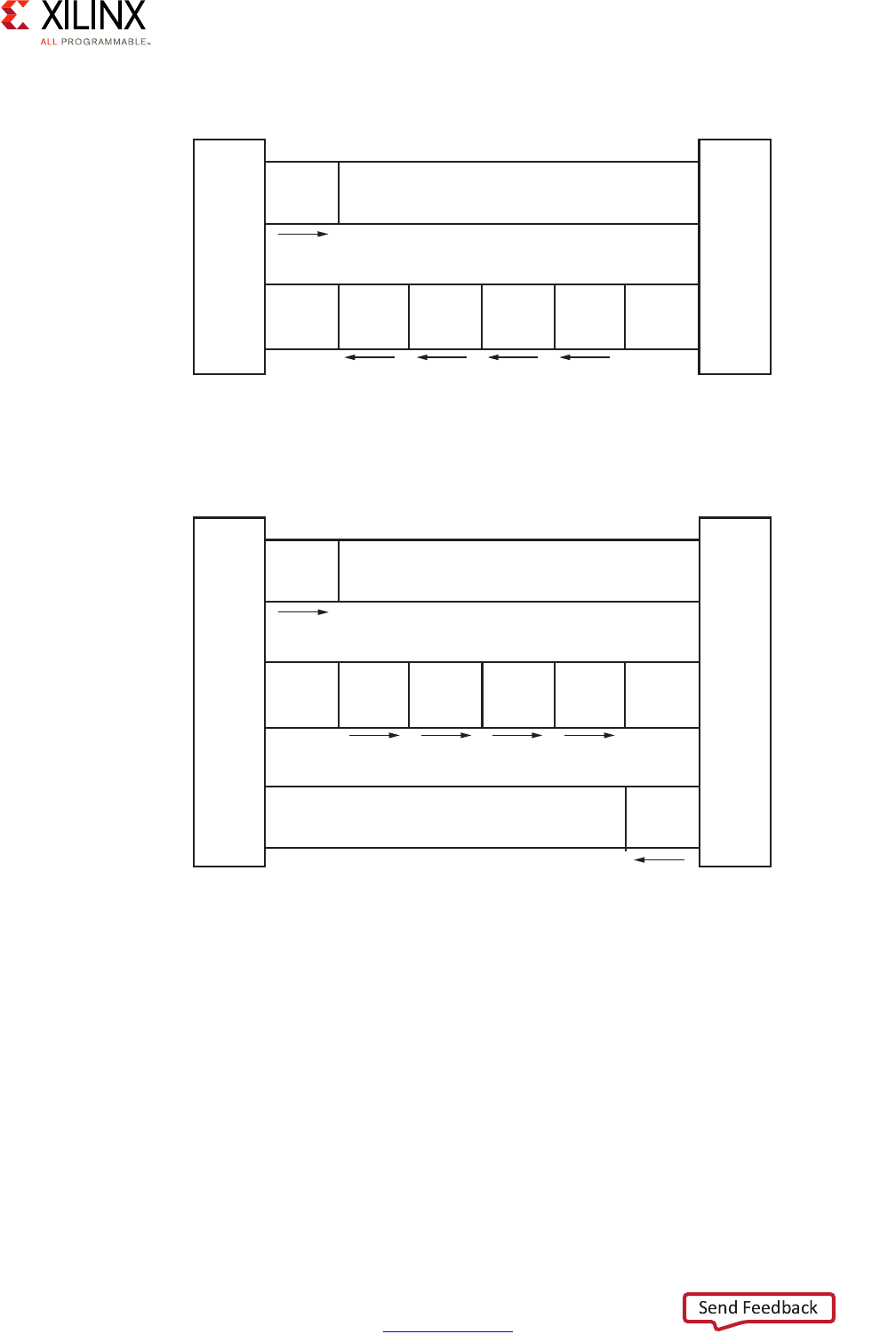

The following figures summarize the interaction between master and slave.

Each Zynq UltraScale+ MPSoC device block can contain many interface masters and slaves.

Given the number of components in the Zynq UltraScale+ MPSoC device and the complex

relationships between them, masters and slaves are rarely connected directly. Instead,

several switches are located at strategic points in the Zynq UltraScale+ MPSoC device to

enable the various blocks to connect to one another while keeping with the Zynq

UltraScale+ MPSoC device's emphasis on power management, security, isolation, and

overall flexibility.

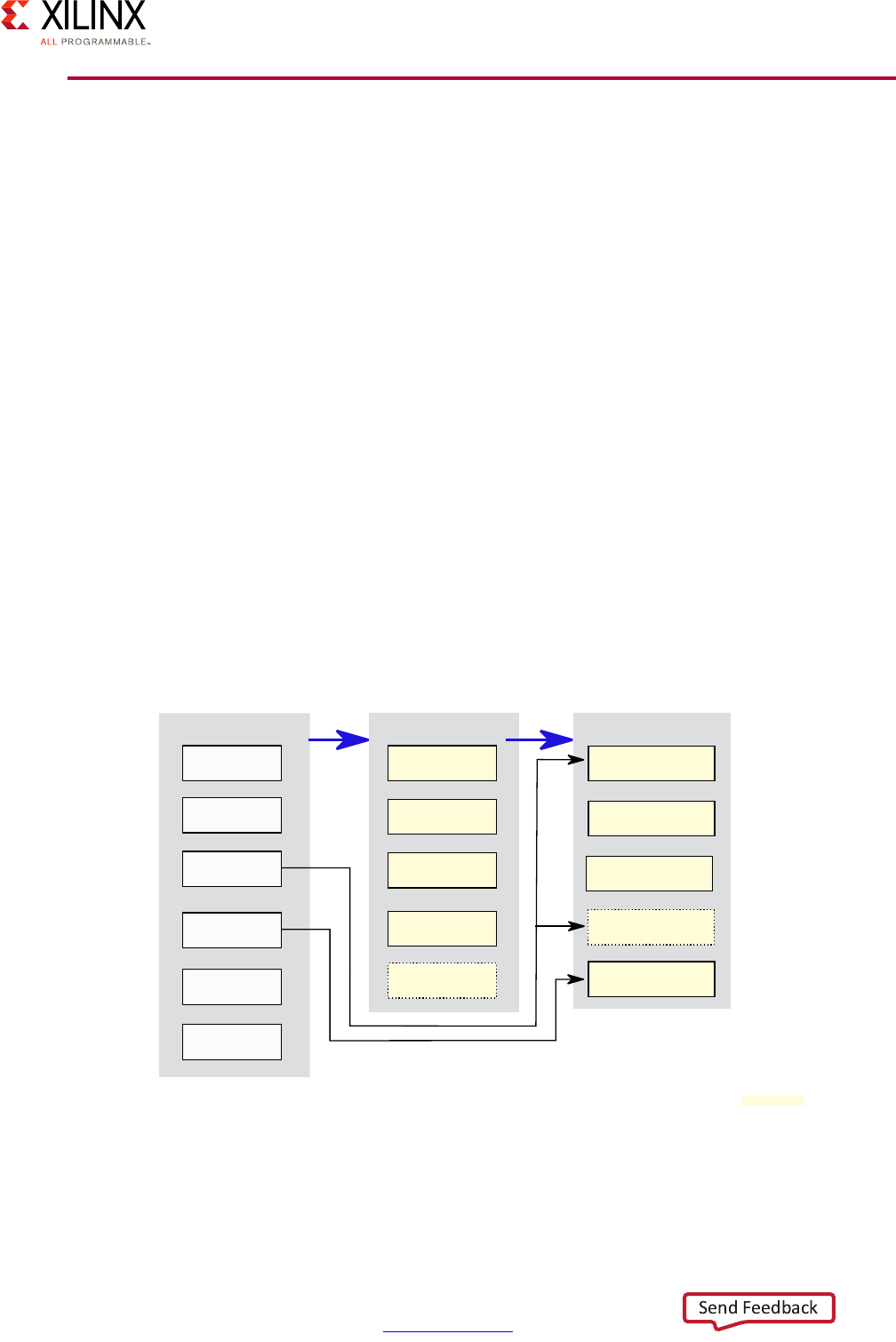

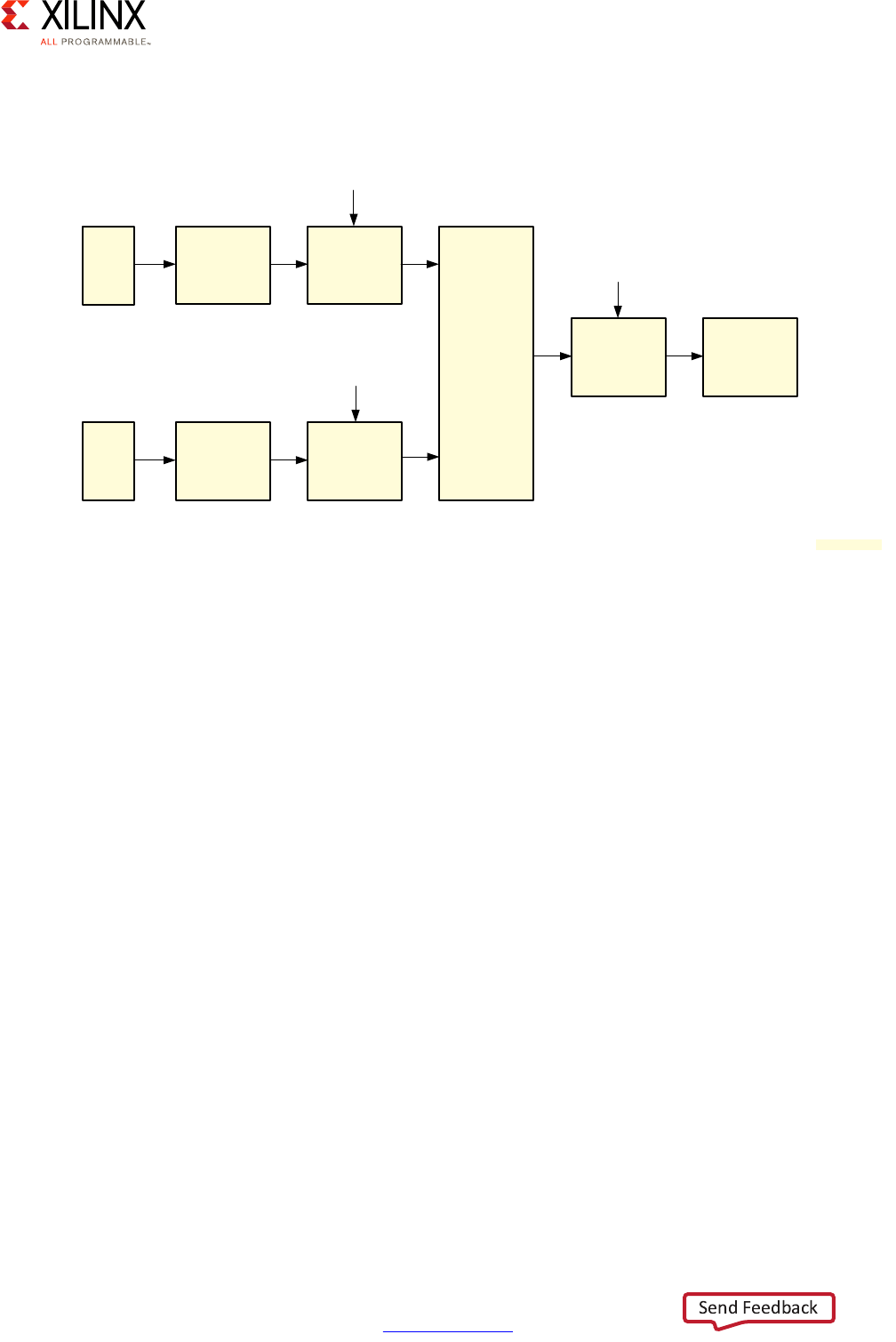

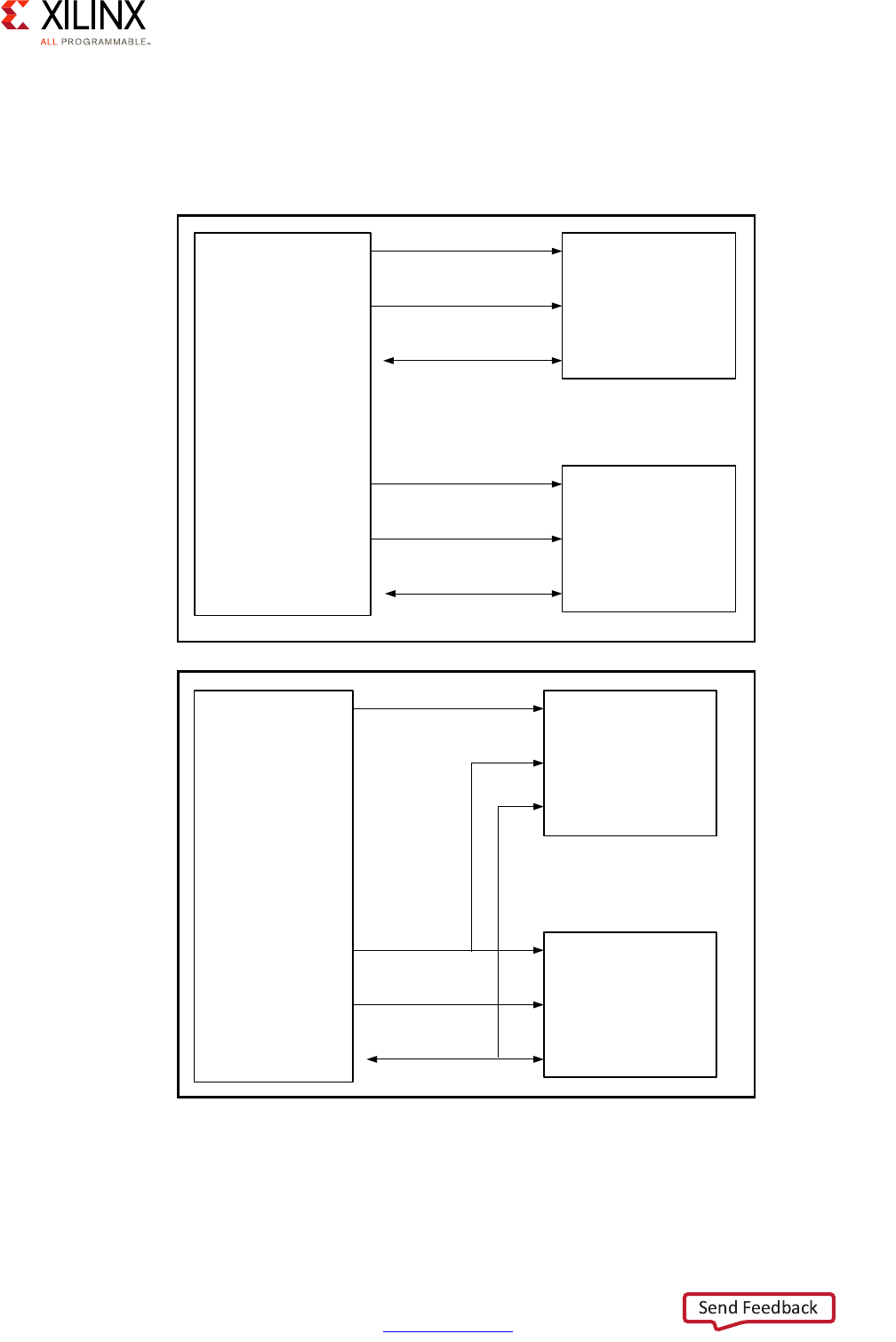

X-Ref Target - Figure 2-10

Figure 2-10: AXI Master and Slave Interaction, Read Channels

X-Ref Target - Figure 2-11

Figure 2-11: AXI Master and Slave Interaction, Write Channels

Master

interface

Read address channel

Address

and

control

Read data channel

Read

data

Read

data

Read

data

Read

data

Slave

interface

X12076

Master

interface

Write address channel

Address

and

control

Write data channel

Write

data

Write

data

Write

data

Write

data

Write

response

Write response channel

Slave

interface

X12077

Zynq UltraScale+ MPSoC Embedded Design Methodology Guide 31

UG1228 (v1.0) March 31, 2017 www.xilinx.com

Chapter 2: Processing System

Traffic Priority and Coherency

There is significant parallel traffic occurring at any point in time in the Zynq UltraScale+

MPSoC device. Different processing blocks and resources have however different priorities

while still many parties on the interconnect are accessing the memory simultaneously.

There must therefore be a way to prioritize traffic and, at the same time, preserve the

coherency of traffic going to the memory.

With regards to traffic, not all of it coming in and out of the interconnect switches is given

the same level of priority. Instead, AXI traffic within the Zynq UltraScale+ MPSoC device's

interconnect falls under one of three categories:

• Low Latency (High Priority)

This type of traffic generally needs to be prioritized over other types of traffic. Such is

the case for traffic between the APU and RPU, and the memory.

• High Throughput (Best Effort)

This type of traffic can tolerate higher latencies, but must have very high throughput.

Such is the case of GPU and the PL.

• Isochronous (Video class)

This type of traffic is mostly tolerant of long latencies, except at some critical moments.

Such is the case of video/image data in general. When timeouts are about to expire, this

class of traffic is given the highest priority.

Zynq UltraScale+ MPSoC Embedded Design Methodology Guide 32

UG1228 (v1.0) March 31, 2017 www.xilinx.com

Chapter 2: Processing System

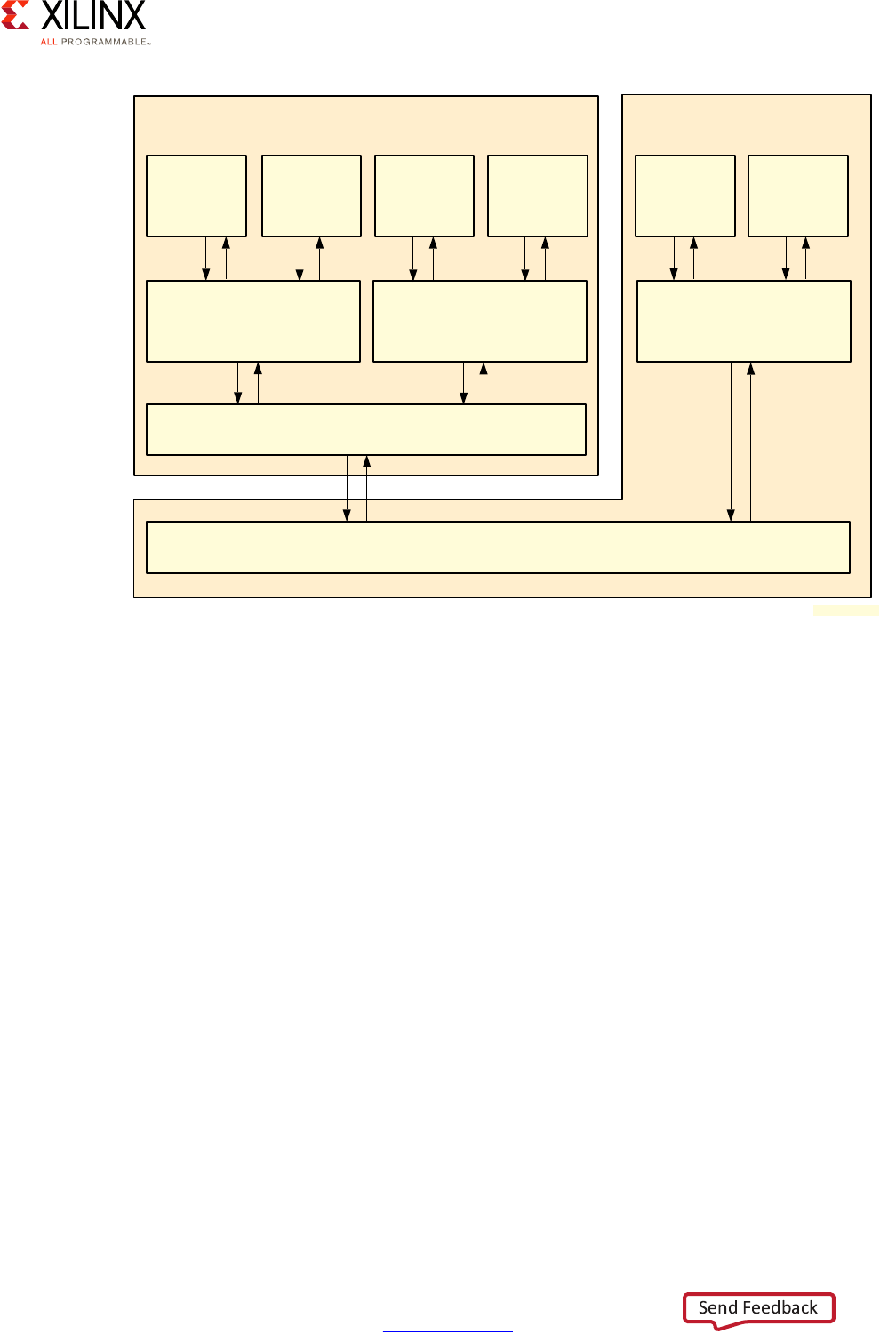

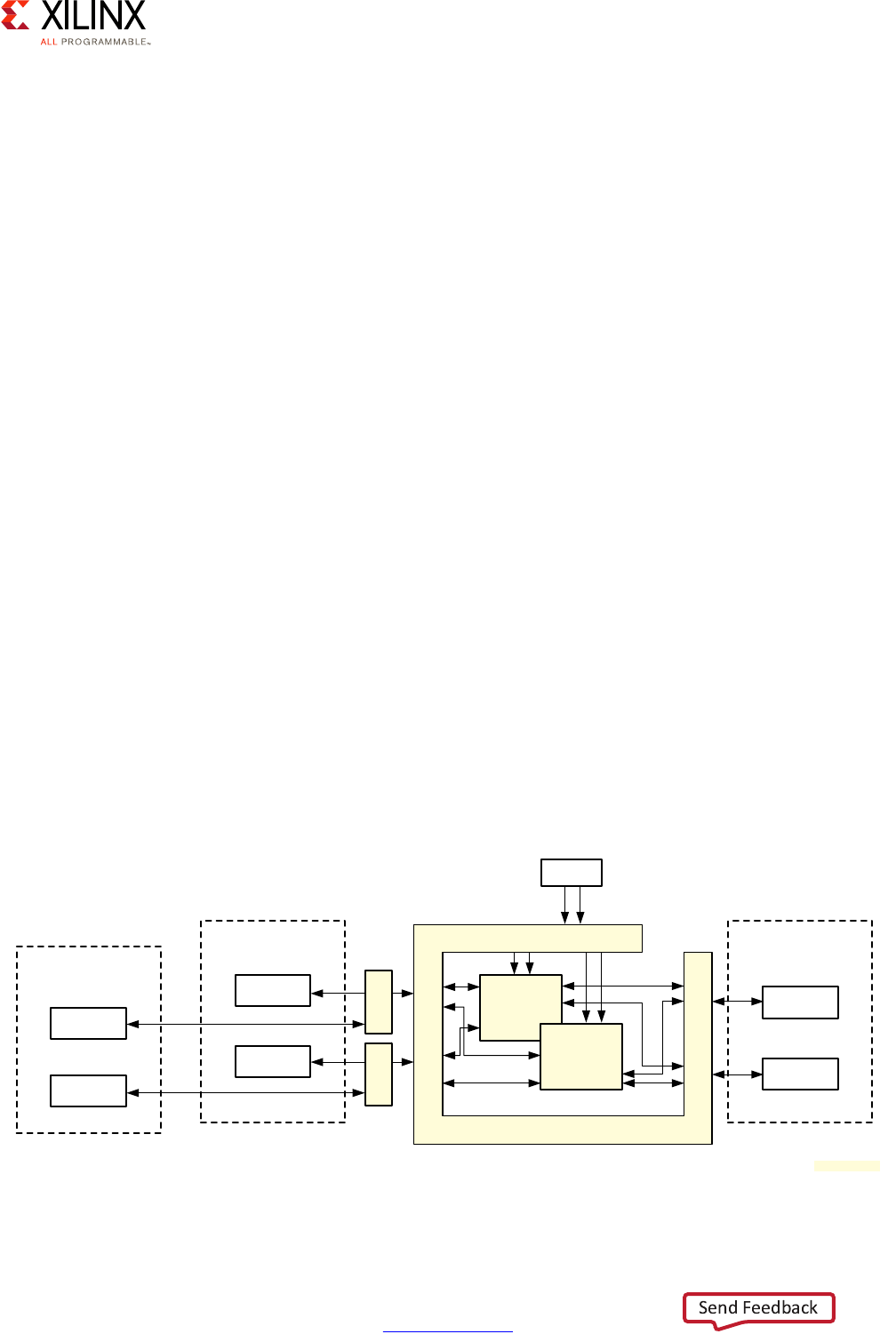

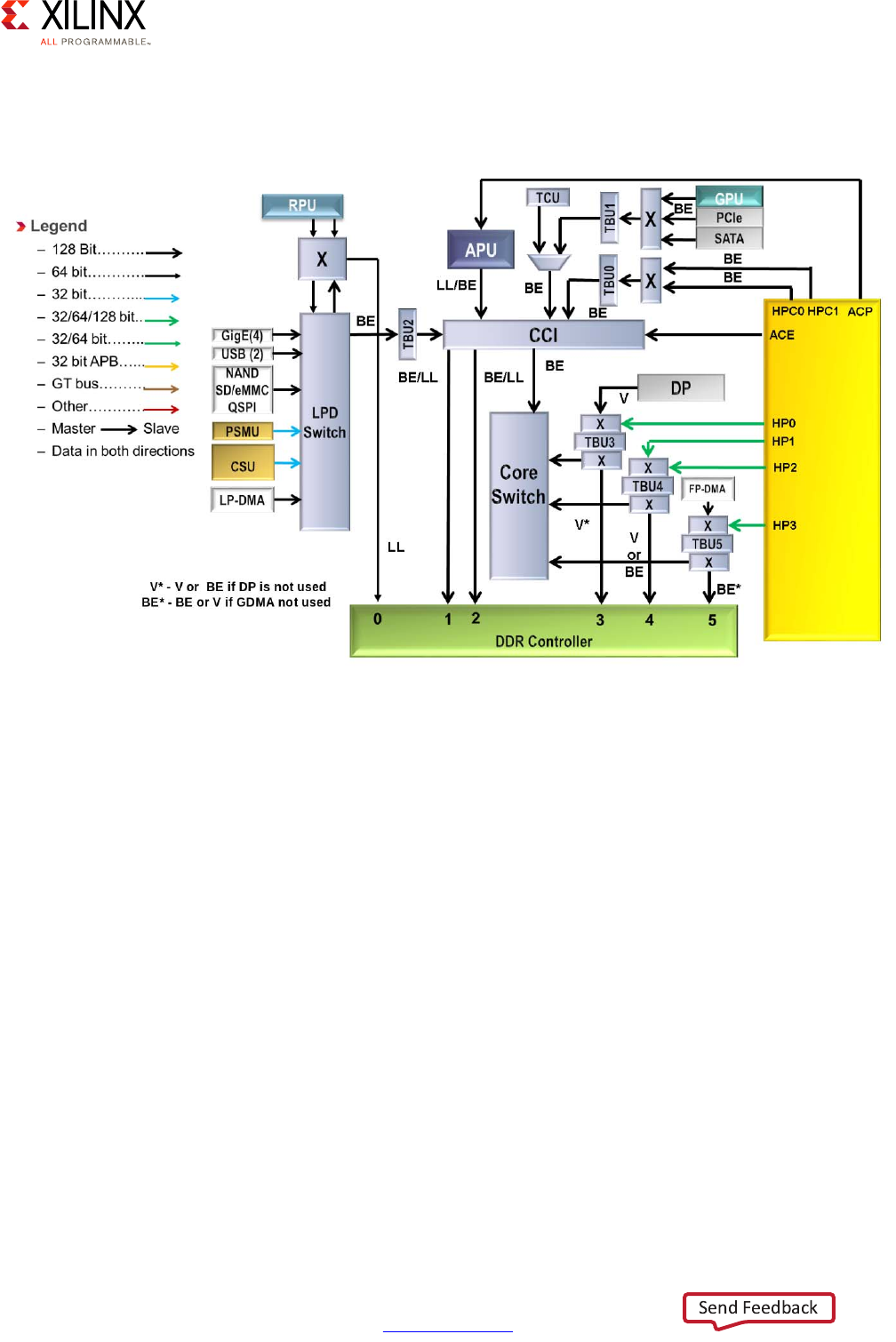

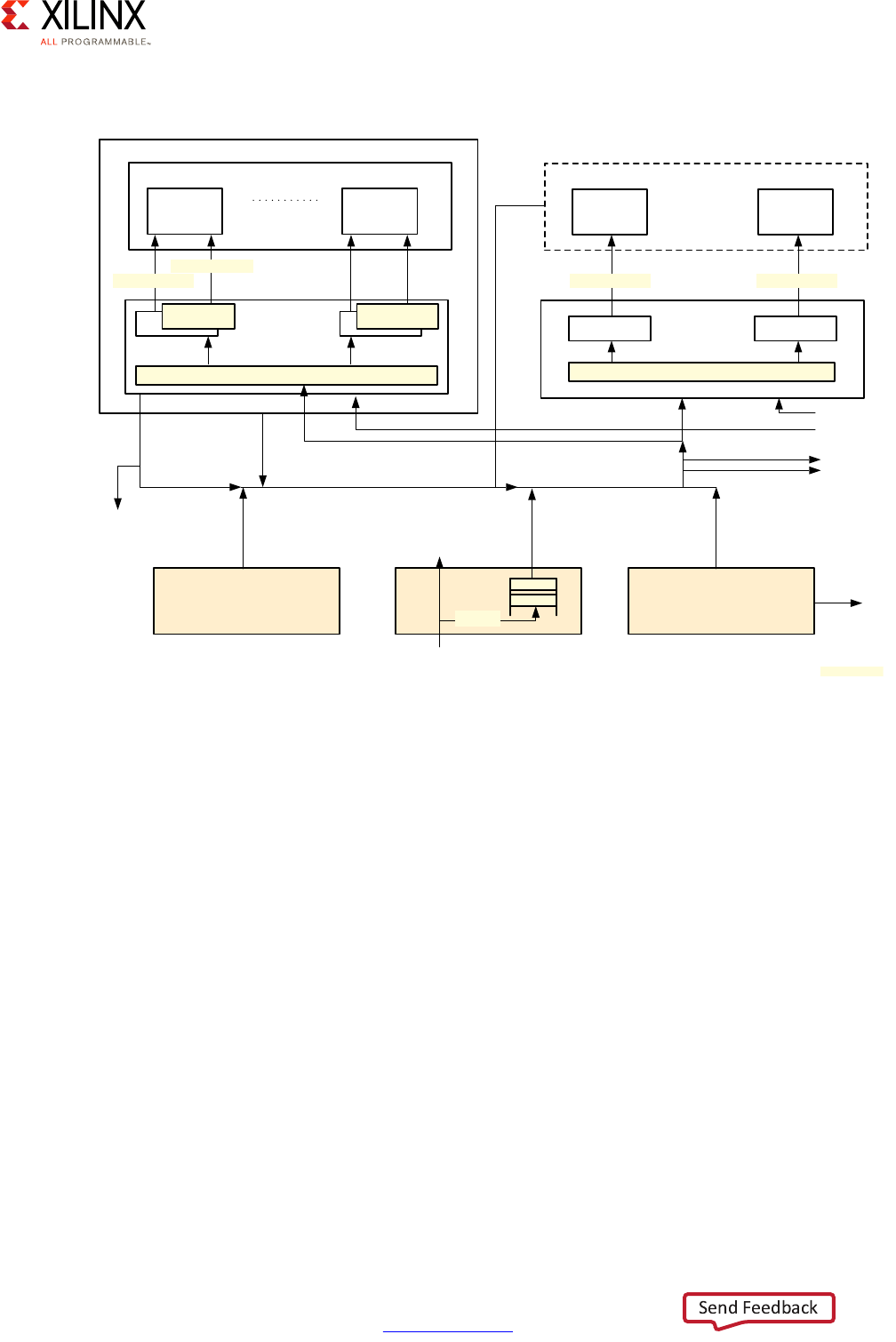

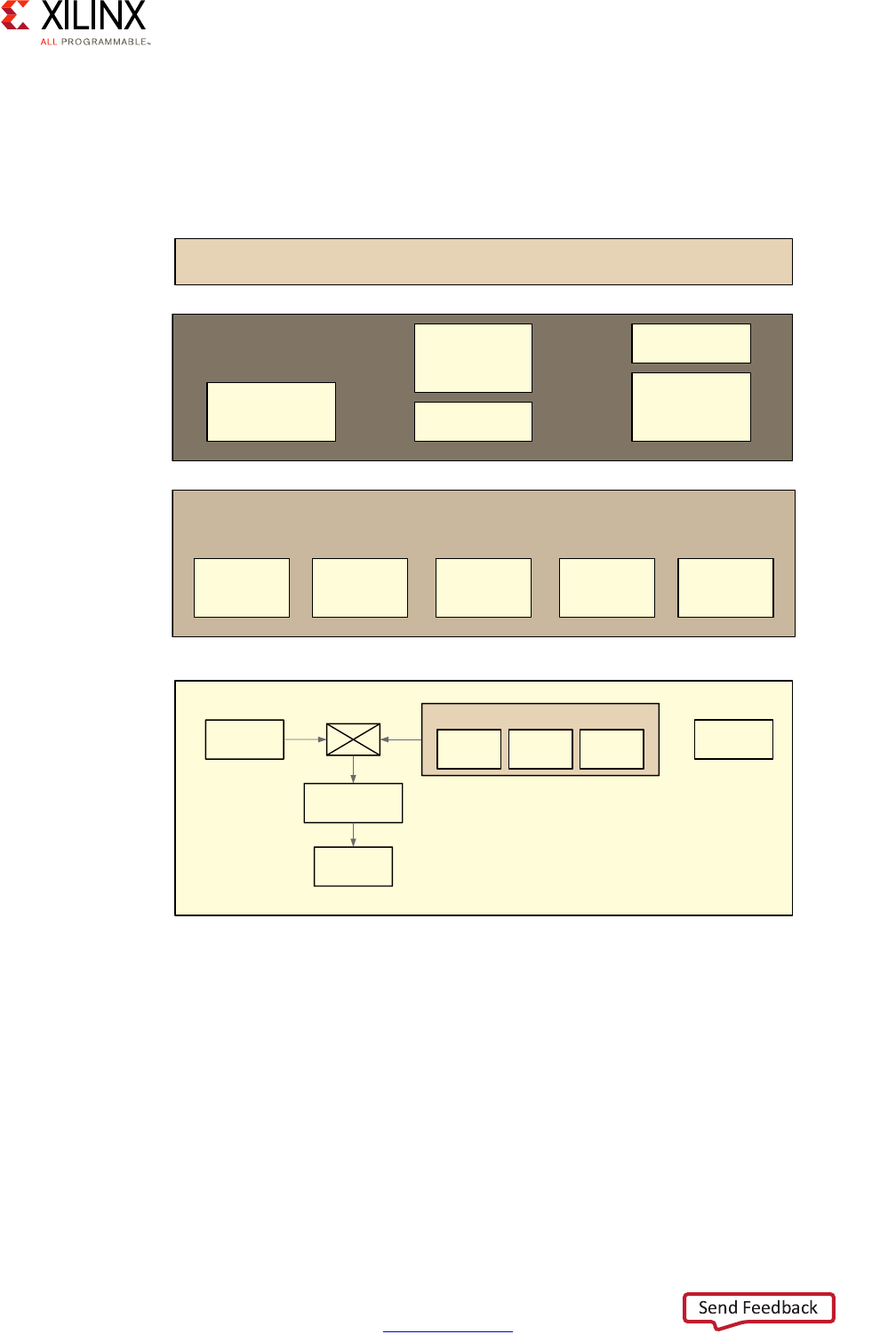

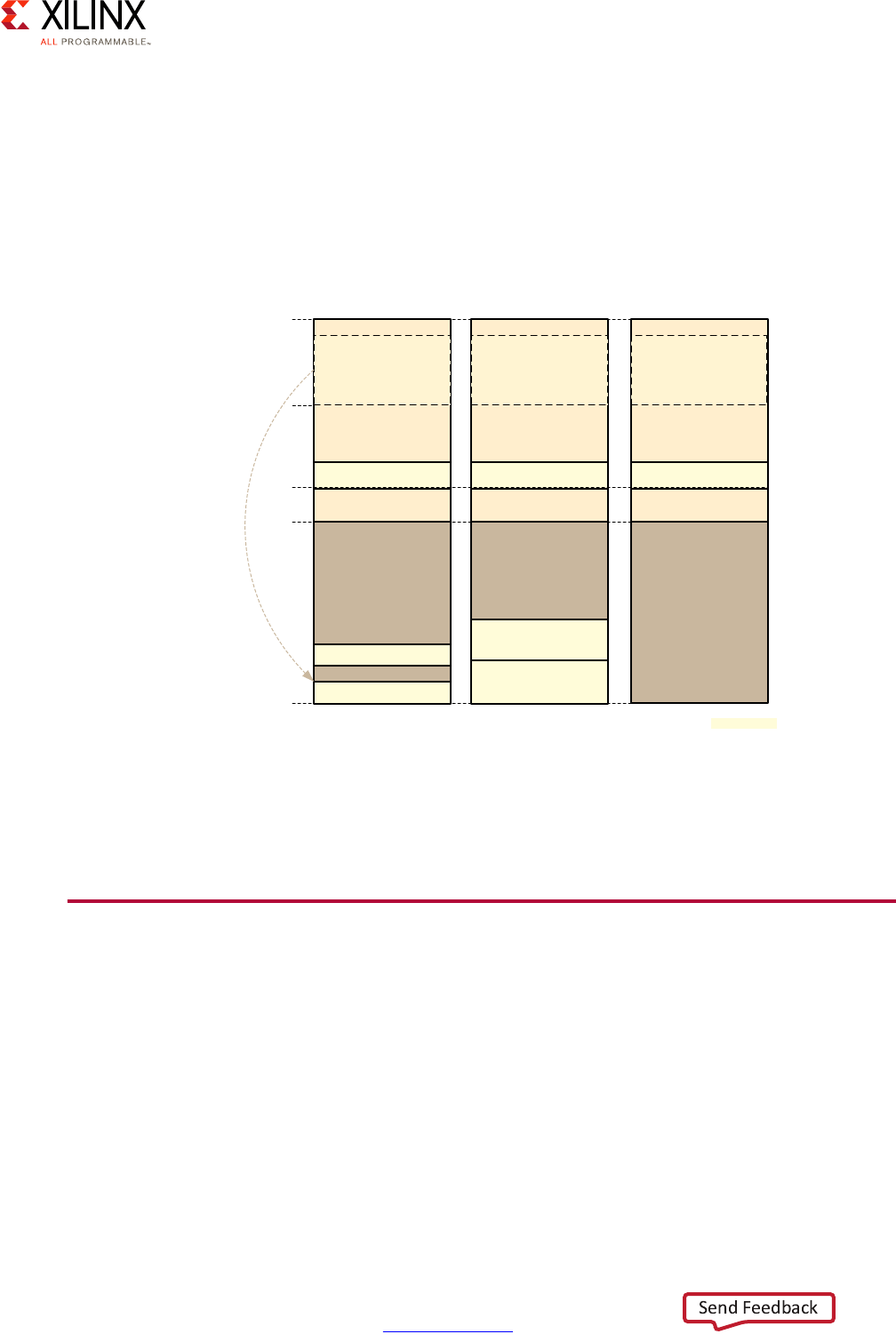

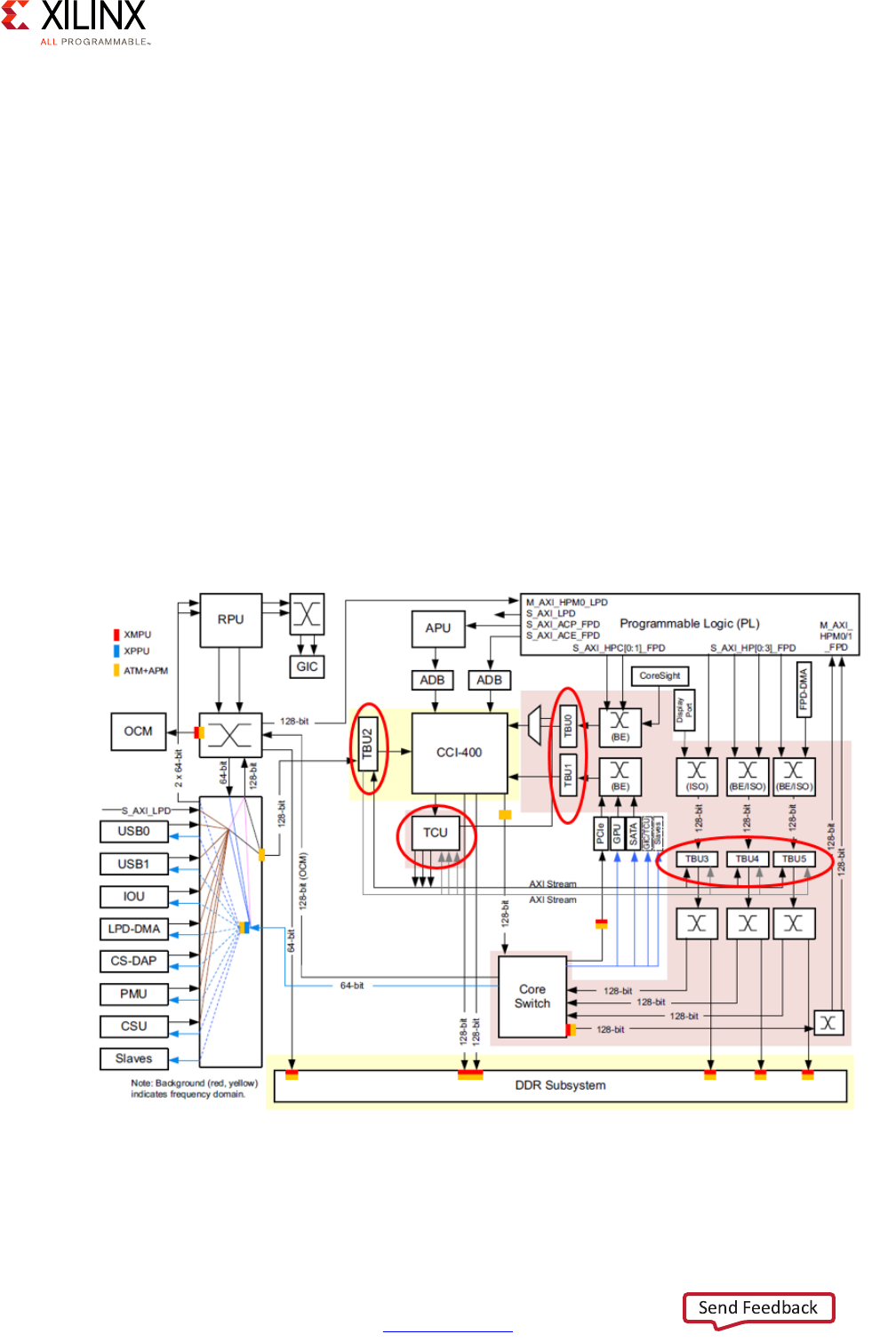

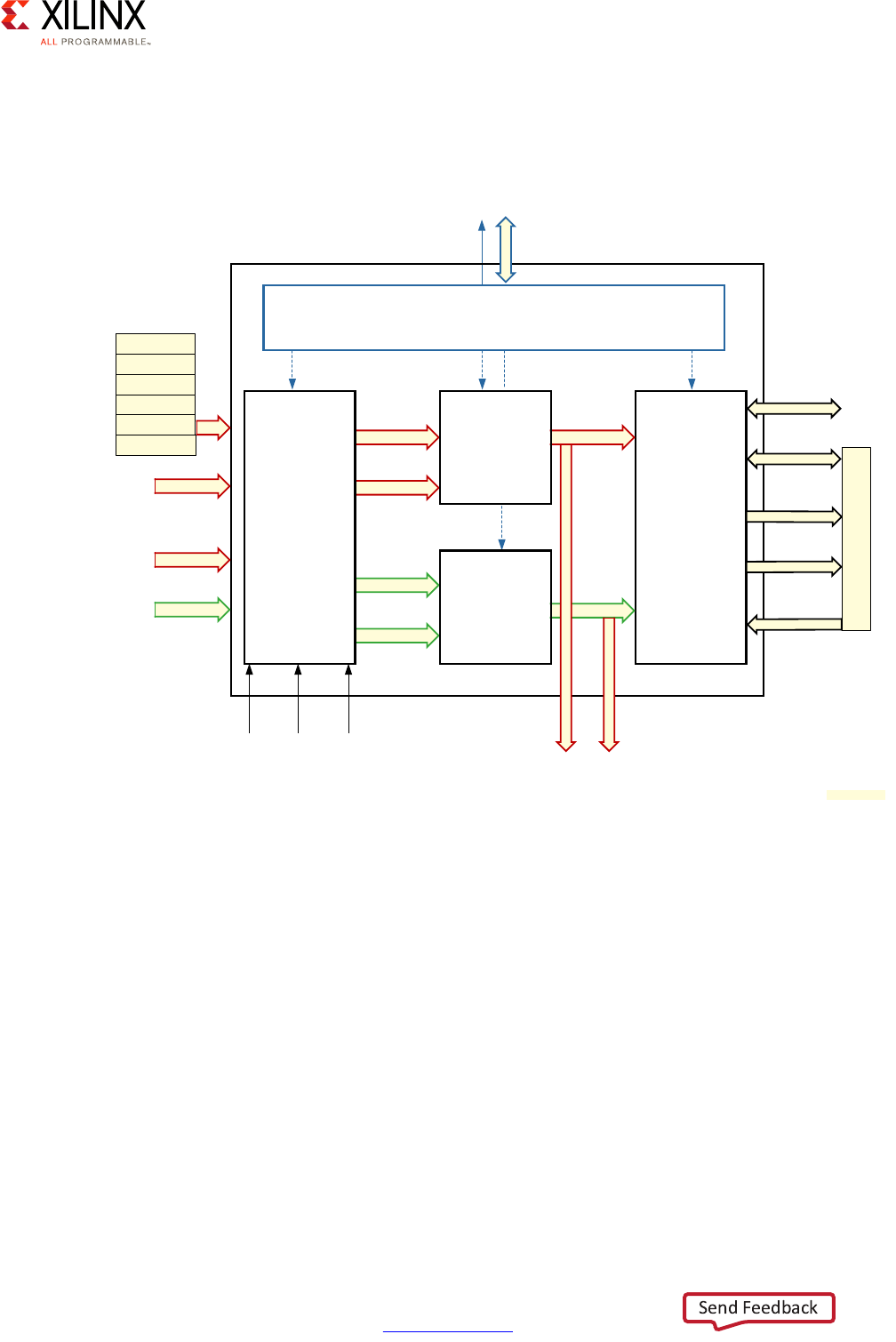

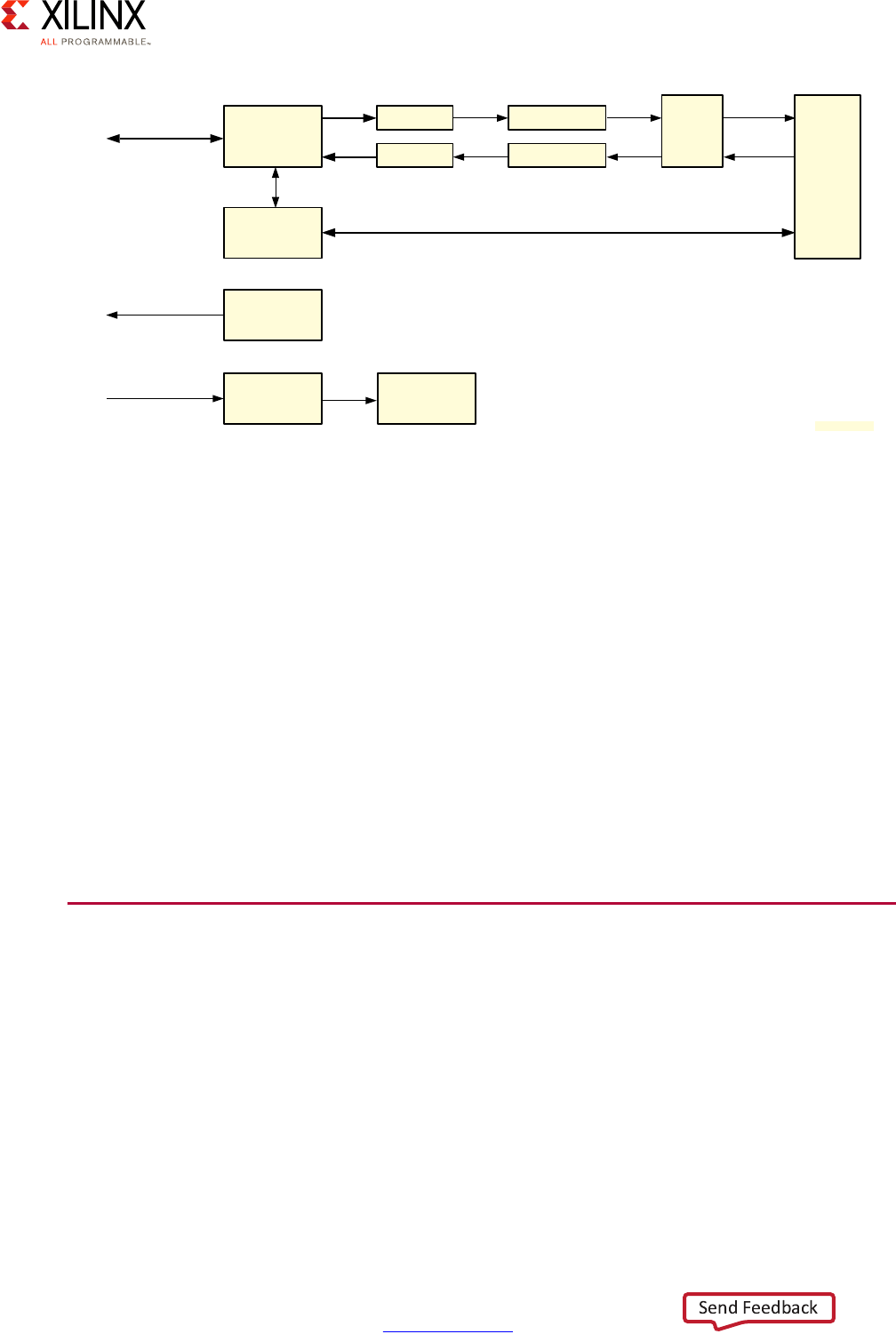

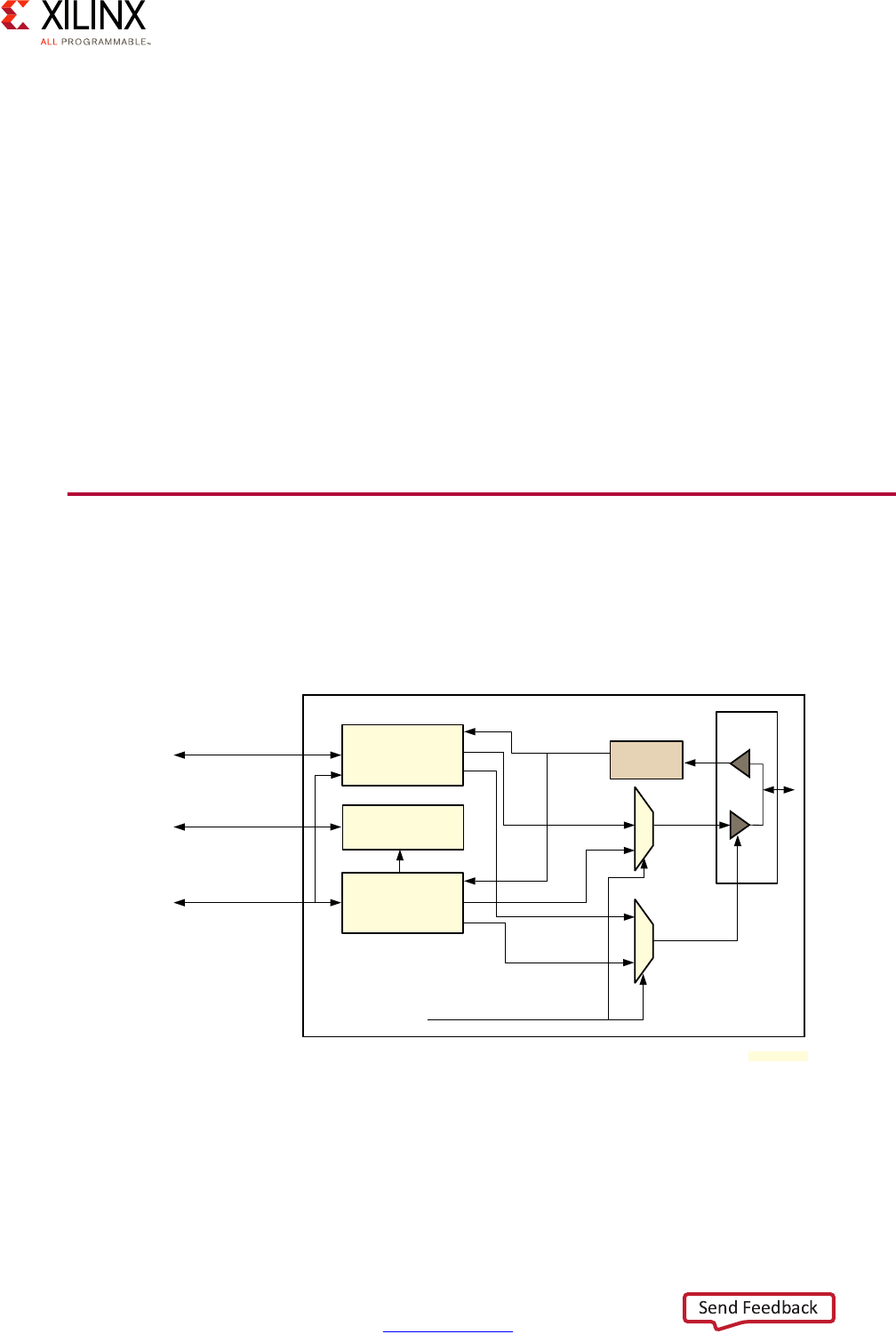

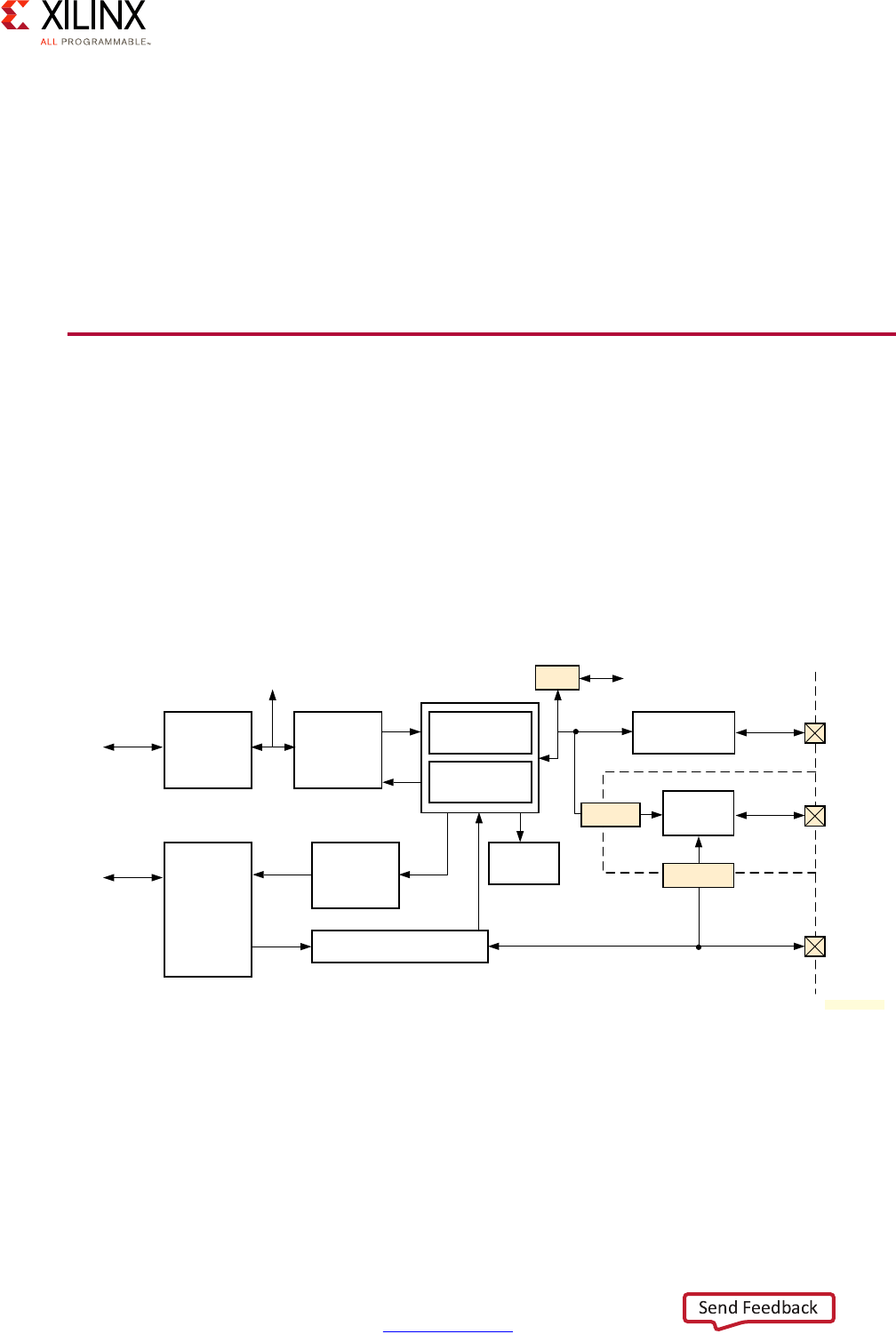

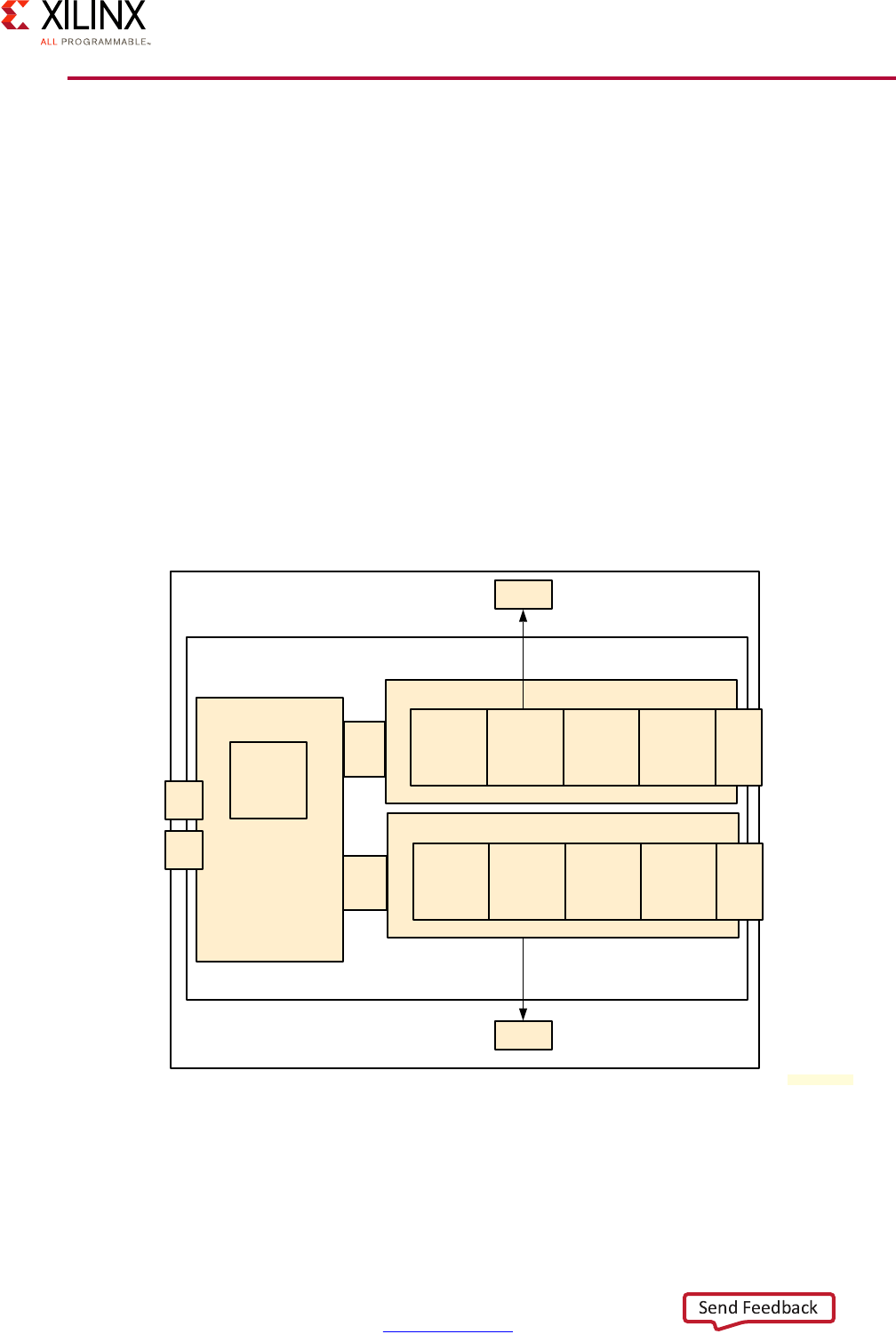

The following diagram provides a simplified view of the Zynq UltraScale+ MPSoC device's

interconnect along with the traffic classes:

The default traffic classes are illustrated as “LL” for Low Latency, “BE” for Best Effort, and “V”

for Video. Many of the blocks in this diagram have already been covered earlier in this

chapter or have been introduced earlier in this section. Note that the TBU and TCU blocks

are part of the SMMU discussed in the previous section. This diagram therefore also shows

the tight relationship between the SMMU and the interconnect.

Apart from the switches, the other key component of the Zynq UltraScale+ MPSoC device's

interconnect is the Cache-Coherent Interconnect (CCI) which ensures that memory

transactions are coherent no matter which parts of the Zynq UltraScale+ MPSoC device are

involved.

X-Ref Target - Figure 2-12

Figure 2-12: Simplified View of Interconnect with Traffic Classes

Zynq UltraScale+ MPSoC Embedded Design Methodology Guide 33

UG1228 (v1.0) March 31, 2017 www.xilinx.com

Chapter 2: Processing System

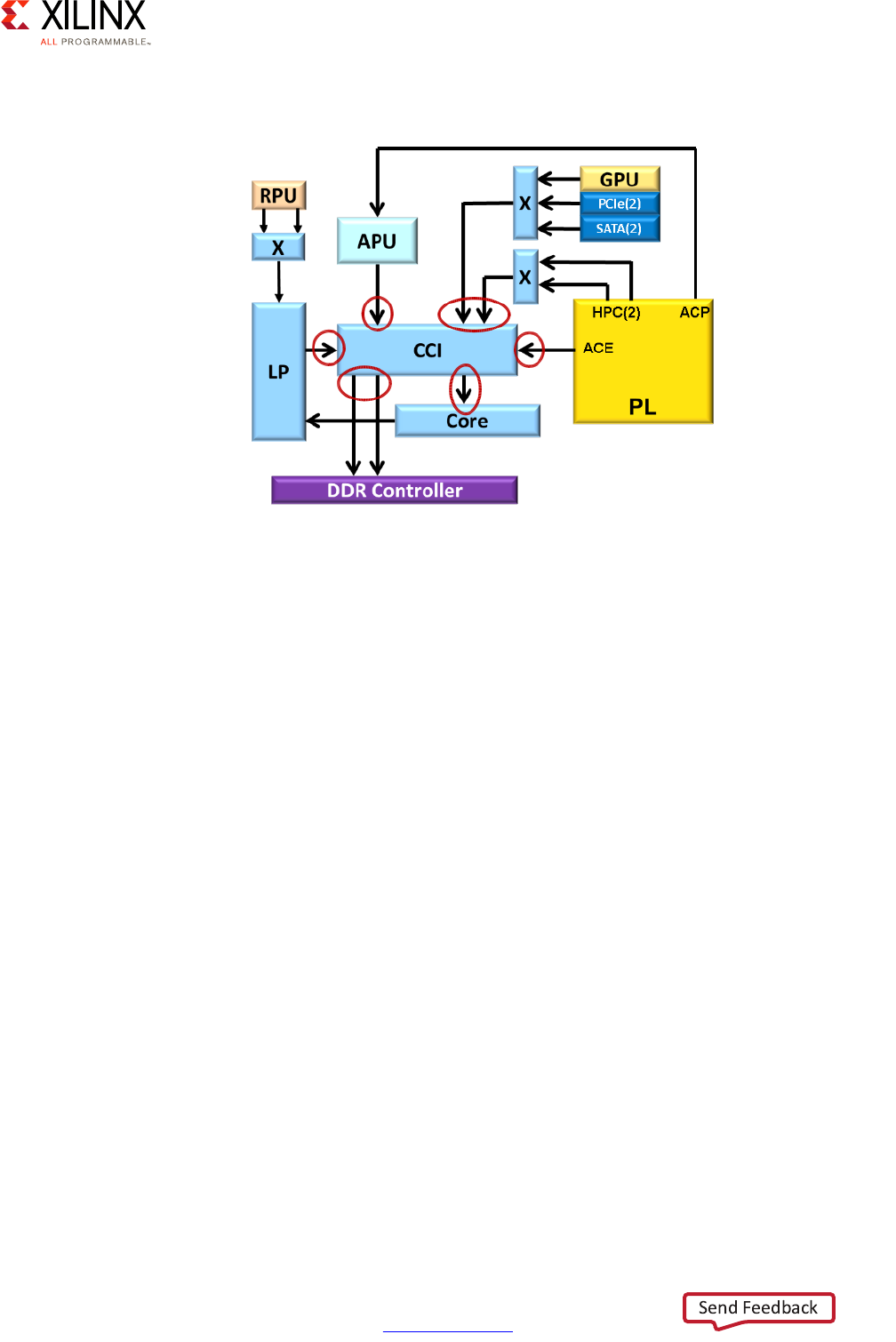

The following diagram highlights the CCI's role:

Detailed View

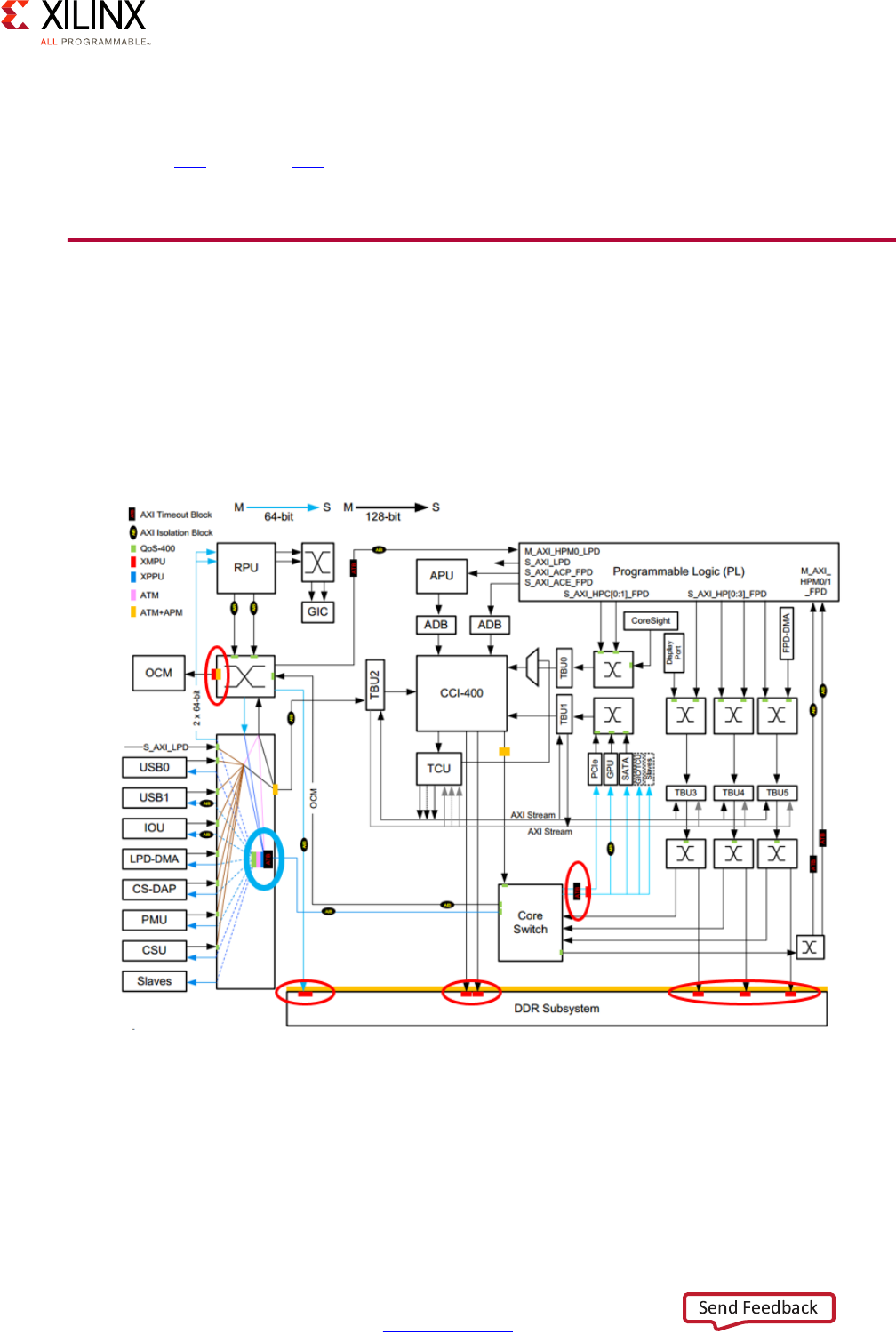

In addition to the components presented earlier in this section, the Zynq UltraScale+

MPSoC device's interconnect also contains a few additional submodules worth knowing

about:

• AXI Timeout Blocks:

Prevents masters from hanging due to unresponsive slaves

• AXI Isolation Blocks (AIBs):

Manages power-down transitions across system blocks

•XMPU/XPPU:

Enforces isolation between master and slave blocks -- discussed in Chapter 7, Resource

Isolation and Partitioning.

• AXI Trace Macrocell (ATMs):

Retrieves AXI traces for CoreSight using the Advanced Trace Bus (ATB)

• AXI Performance Monitor (APMs):

Captures AXI performance metrics

X-Ref Target - Figure 2-13

Figure 2-13: Role of the CCI

Zynq UltraScale+ MPSoC Embedded Design Methodology Guide 34

UG1228 (v1.0) March 31, 2017 www.xilinx.com

Chapter 2: Processing System

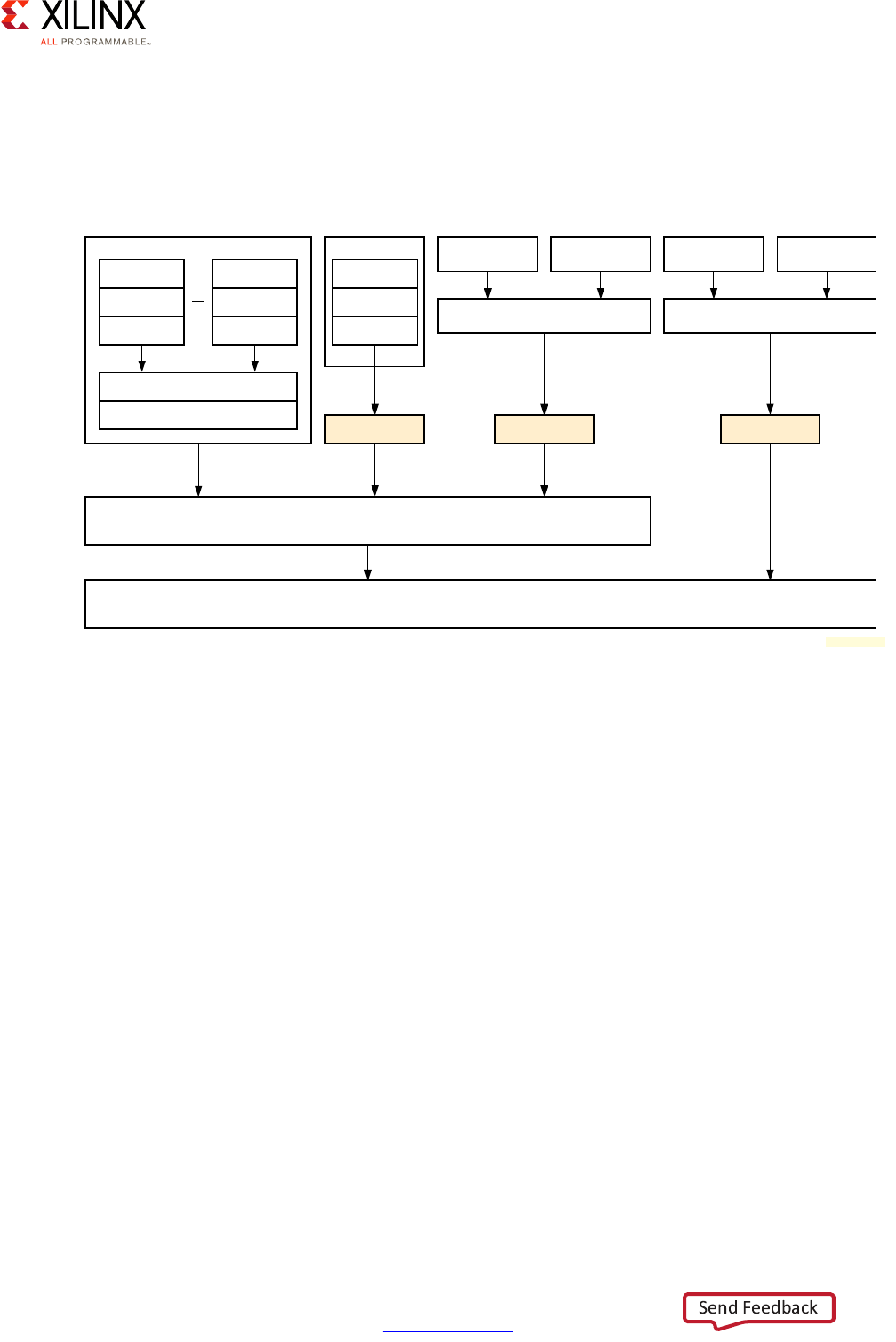

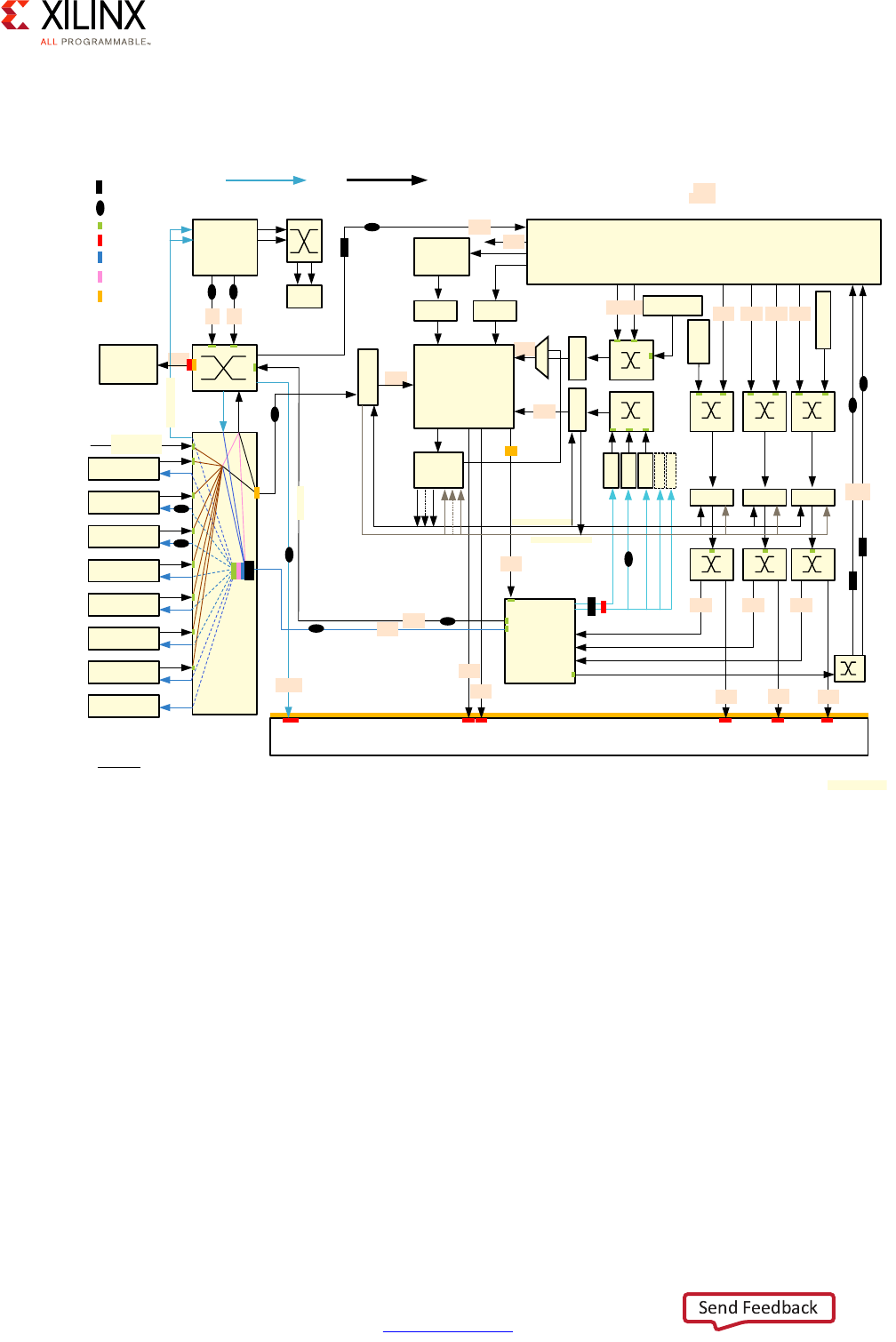

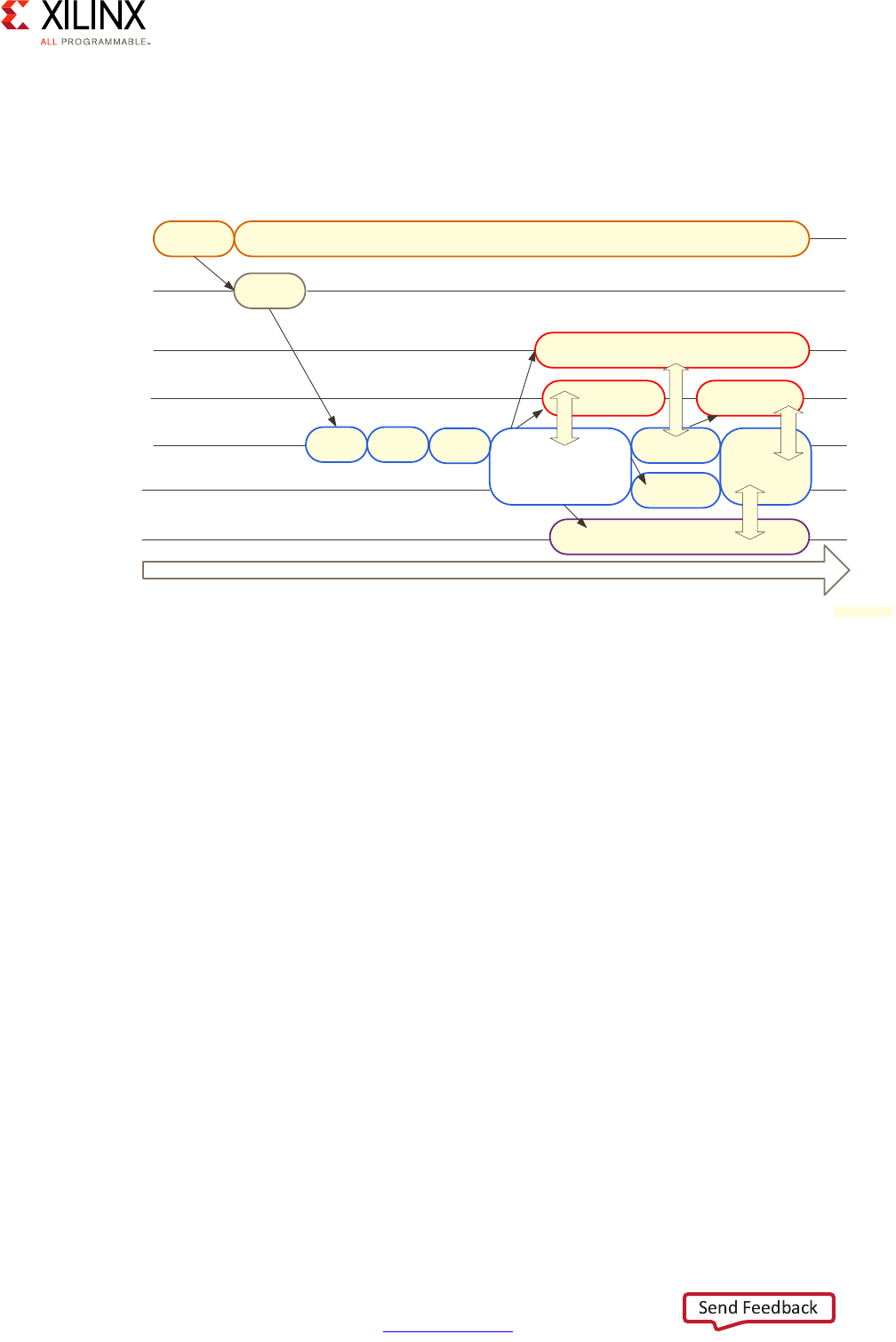

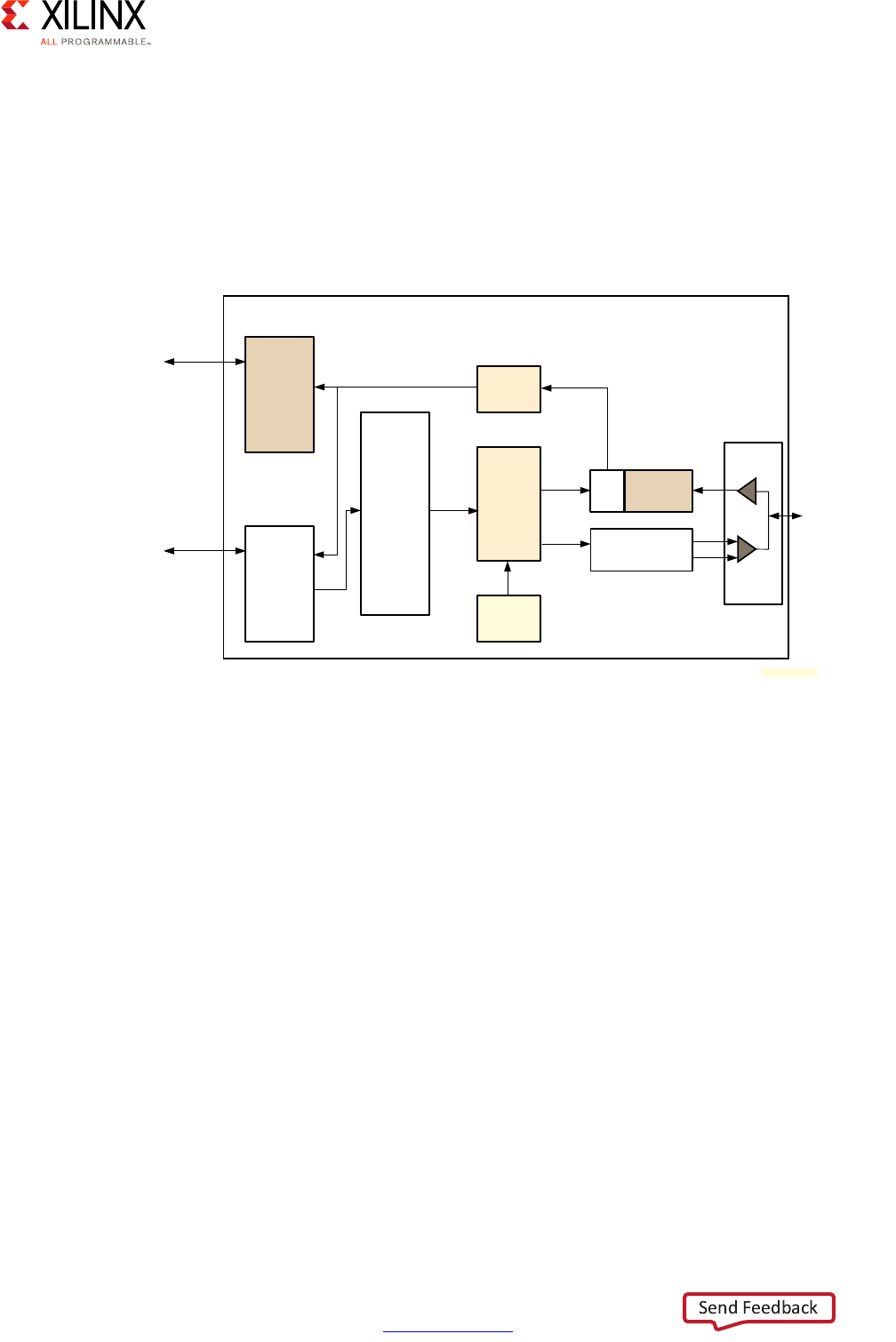

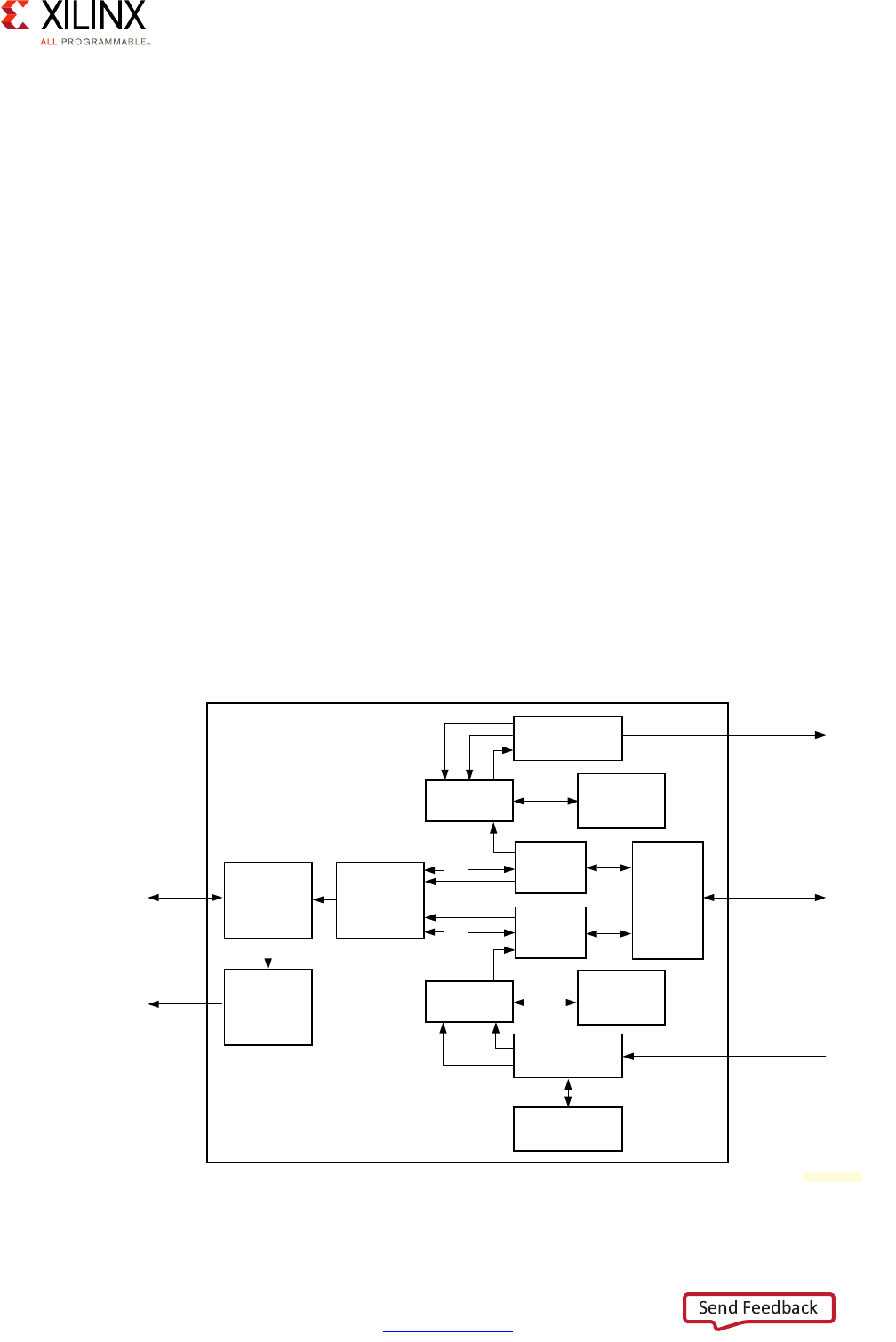

The following diagram captures the detailed view of the Zynq UltraScale+ MPSoC device's

interconnect based on the previous explanations:

Quality of Service (QoS)

Another very important aspect of the interconnect that is illustrated in the previous

diagram is Quality of Service (QoS). There are two parts of the system participating in QoS,

the interconnect switches and the CCI.

Switch-based QoS

If you look closely at the diagram, you will notice that most switches include QoS-400

capabilities. QoS-400 is an ARM addition to the CoreLink NIC-400 standard mentioned

earlier that is used by the Zynq UltraScale+ MPSoC device for its switch interconnect. A

QoS-400 regulator is assigned to most AXI masters in the interconnect.

Note: A QoS-400 “regulator” is the term used in ARM documentation to describe the blocks

associated with AXI masters to control their behavior.

X-Ref Target - Figure 2-14

Figure 2-14: Detailed View of the Zynq UltraScale+ MPSoC Device Interconnect

8

16

16

16

OCM

RPU

USB1

IOU

LPD-DMA

CS-DAP

CSU

Slaves

GIC

APU

ADB ADB

CCI-400

TBU2

TCU

Programmable Logic (PL)

Display

Port

TBU0TBU1

FPD-DMA

PCIe

GPU

SATA

GIC/TCU

Slaves

TBU3 TBU4 TBU5

Core

Switch

DDR Subsystem

2 x 64-bit

S_AXI_LPD

USB0

OCM

AXI Stream

XMPU

XPPU

ATM

PMU

AXI Stream

M_AXI_HPM0_LPD

S_AXI_LPD

S_AXI_ACP_FPD

S_AXI_ACE_FPD

QoS-400

AXI Isolation Block

AXI Timeout Block

ATM+APM

S_AXI_HPC[0:1]_FPD S_AXI_HP[0:3]_FPD

AIB ATB

AIB

AIB

AIB

AIB

ATB

AIB

AIB

AIB

AIB

AIB

ATB

ATB

AIB

M_AXI_

HPM0/1

_FPD

AIB

AIB

ATB

64-bit

MS128-bit

MS

Acronyms

ADB: AMBA Domain Bridge; TCU: Translation Control Unit; TBU: Translation Buffer Unit

16 16 1616

16 16

8

16

8

8

32

32

32

32

32

28

16

ATB

16

16

14,8

8

8

8

8

S3

S2

S1

S0

S4

S3

M0M1

M2

DVM

S5S4S2S1S0

16

The following markings designate the read/write capability of the bus.

Where an example value of 16 designates 16 reads and 16 writes.

Two values (for example 14,8) designates 14 reads and 8 writes. 14,8

X15277-100116

CoreSight

Zynq UltraScale+ MPSoC Embedded Design Methodology Guide 35

UG1228 (v1.0) March 31, 2017 www.xilinx.com

Chapter 2: Processing System

This regulator allows limiting the following for each AXI master:

• Maximum number of outstanding transactions possible at any one time

• Command issue rate

CCI-based QoS

The CCI-400 used in the Zynq UltraScale+ MPSoC device has a QoS Virtual Network (QVN)

feature that is used to avoid the head-of-line blocking (HOLB) effect from occurring during

memory accesses from requests generated by two different traffic priority classes. The

following figure illustrates this scenario:

In this case, one traffic going through the CCI is tagged as Low-Latency while the other is

tagged as Best Effort. HOLB would occur if the lower priority traffic, namely Best Effort,

would "hold the line" (i.e. a DDR port) from the higher priority traffic, namely Low Latency.

The QVN uses different queues and tokens to arbitrate the traffic between the two DDR

ports and avoid the delay caused by HOLB.

This is helpful in the case of the APU as it isn't confined to using a single preassigned DDR

port and is instead switching constantly between the two DDR ports attached to the

CCI-400 to which the APU is itself attached; see the diagram from the previous section.

Since the APU's traffic is generally Low-Latency and most other traffic sharing the CCI with

it is Best Effort, the use of QVN as just explained ensures the APU gets the appropriate QoS

for its memory accesses.

X-Ref Target - Figure 2-15

Figure 2-15: CCI-based QoS

CCI-400

LL Traffic BE Traffic

DDR Controller

Port 1 Port 2

X15889-101816

Zynq UltraScale+ MPSoC Embedded Design Methodology Guide 36

UG1228 (v1.0) March 31, 2017 www.xilinx.com

Chapter 2: Processing System

Customizing QoS

In the vast majority of cases there is no need to modify the QoS setup used by default in the

Zynq UltraScale+ MPSoC device. However, if you are having issues and would like to

possibly tweak some of the QoS-400 regulators, for instance, start by using the built-in data

collection capabilities made possible by the APMs and ATMs mentioned earlier. Xilinx

Software Development Kit (SDK) User Guide: System Performance Analysis (UG1145) [Ref 11]

helps you use the performance monitoring capabilities of the freely-available Xilinx

Software Development Kit (SDK) to model traffic and retrieve live runtime information from

the Zynq UltraScale+ MPSoC device. You can also do the same with the SDSoC

Development Environment. This is a unique feature of Xilinx enabling you not just to

retrieve information from the APU but from the rest of the interconnect connecting all the

Zynq UltraScale+ MPSoC device's internal blocks.

With the data from these tools, and review of the full interconnect diagram shown earlier,

you can identify hot paths in your system and adjust its configuration as necessary. This is

typically done by identifying which traffic from which AXI masters is being negatively

impacted by traffic from lower priority masters. You can then throttle the less important AXI

masters by tweaking their corresponding QoS-400 regulators. If the traffic from the APU

and the RPU is less important than that of the PL, for example, you can configure the APU's

and RPU's regulators to favor traffic from the PL instead. To effectively pull this off, however,

you must make sure that you have a solid understanding of the traffic flow within the Zynq

UltraScale+ MPSoC device.

Only pursue this if the default settings don't work for your design.

PL Interfaces

One aspect of the Zynq UltraScale+ MPSoC device interconnect that is under the designer's

full control are the connections between the PL and the rest of the system. As can be seen

in the main interconnect diagram presented earlier and as will be discussed in Chapter 5,

Programmable Logic, there are several paths from the PL to the interconnect and therefore

the rest of the system. The explanations found in Chapter 5 build on the explanations

provided earlier in this section.

Zynq UltraScale+ MPSoC Embedded Design Methodology Guide 37

UG1228 (v1.0) March 31, 2017 www.xilinx.com

Chapter 2: Processing System

Additional Information

For additional information regarding the interconnect, refer to the corresponding chapter

in Zynq UltraScale+ MPSoC Technical Reference Manual (UG1085) [Ref 7], as well as the

official ARM documentation regarding:

• AMBA 4.0–the core AXI standard

• NIC-400–the switch interconnect

• CCI-400–the cache coherent interconnect

• QoS-400–the QoS addition to NIC-400

Interrupts

With its highly integrated, heterogeneous design the Zynq UltraScale+ MPSoC device

comprises a large number of interrupt sources and several ways of handling them. Most of

the integrated peripherals, for instance, trigger interrupts to notify processors of important

events; this includes Ethernet, USB, GPU, DisplayPort, DMA, UART, SPI, SD, etc. The PL can

also trigger 16 different interrupts.

Additionally, the Zynq UltraScale+ MPSoC device includes configurable Inter-Processor

Interrupts (IPIs) that can be used to enable the independent processing blocks to

communicate with each other.

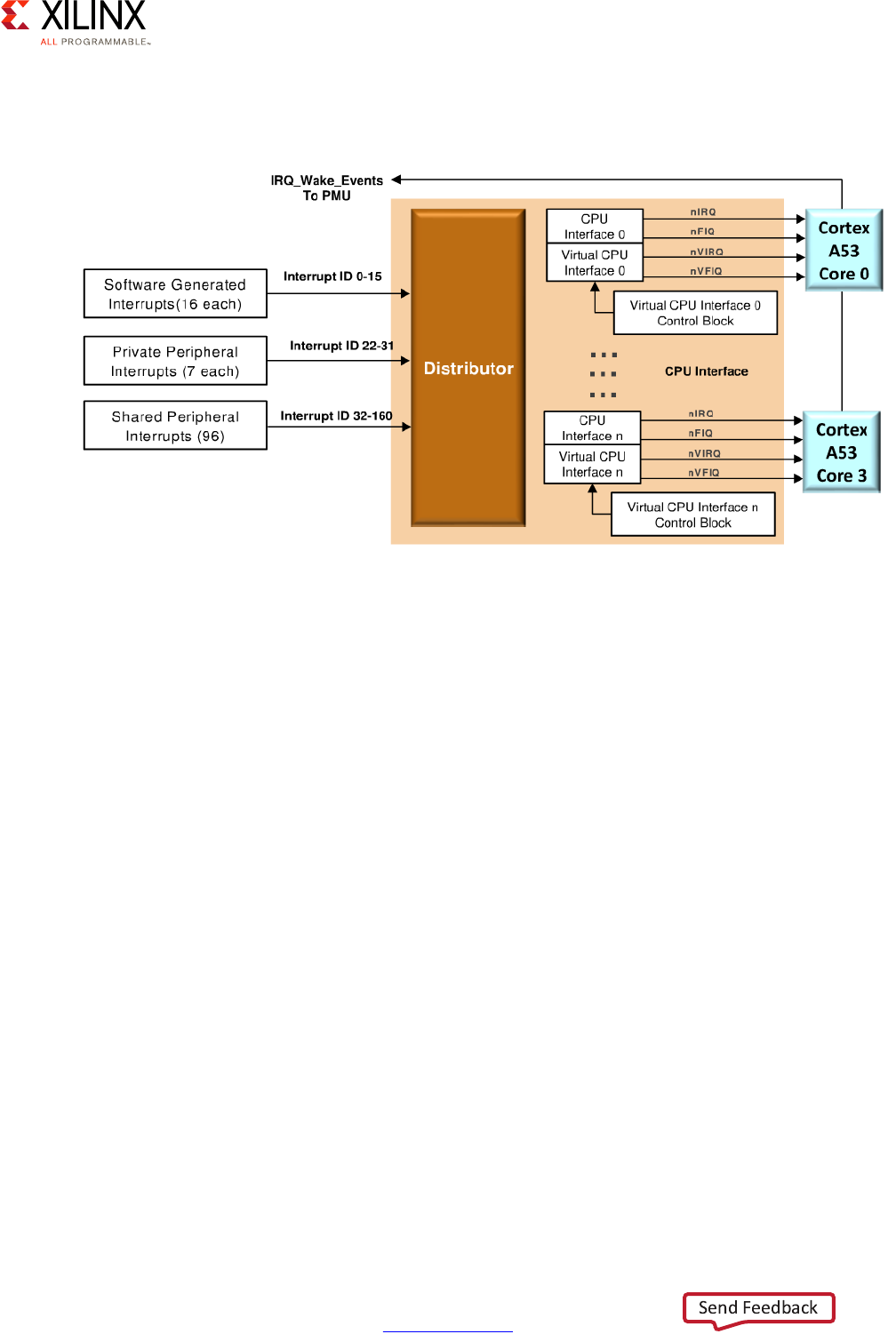

There are two interrupt controllers in on the Zynq UltraScale+ MPSoC device, one for the

APU and one for the RPU. The APU's interrupt controller implements the ARM Global

Interrupt Controller version 2 (GICv2) specification while the RPU's interrupt controller is

based on the ARM GICv1 specification. A key benefit of the former is enabling interrupt

virtualization on the APU.

Zynq UltraScale+ MPSoC Embedded Design Methodology Guide 38

UG1228 (v1.0) March 31, 2017 www.xilinx.com

Chapter 2: Processing System

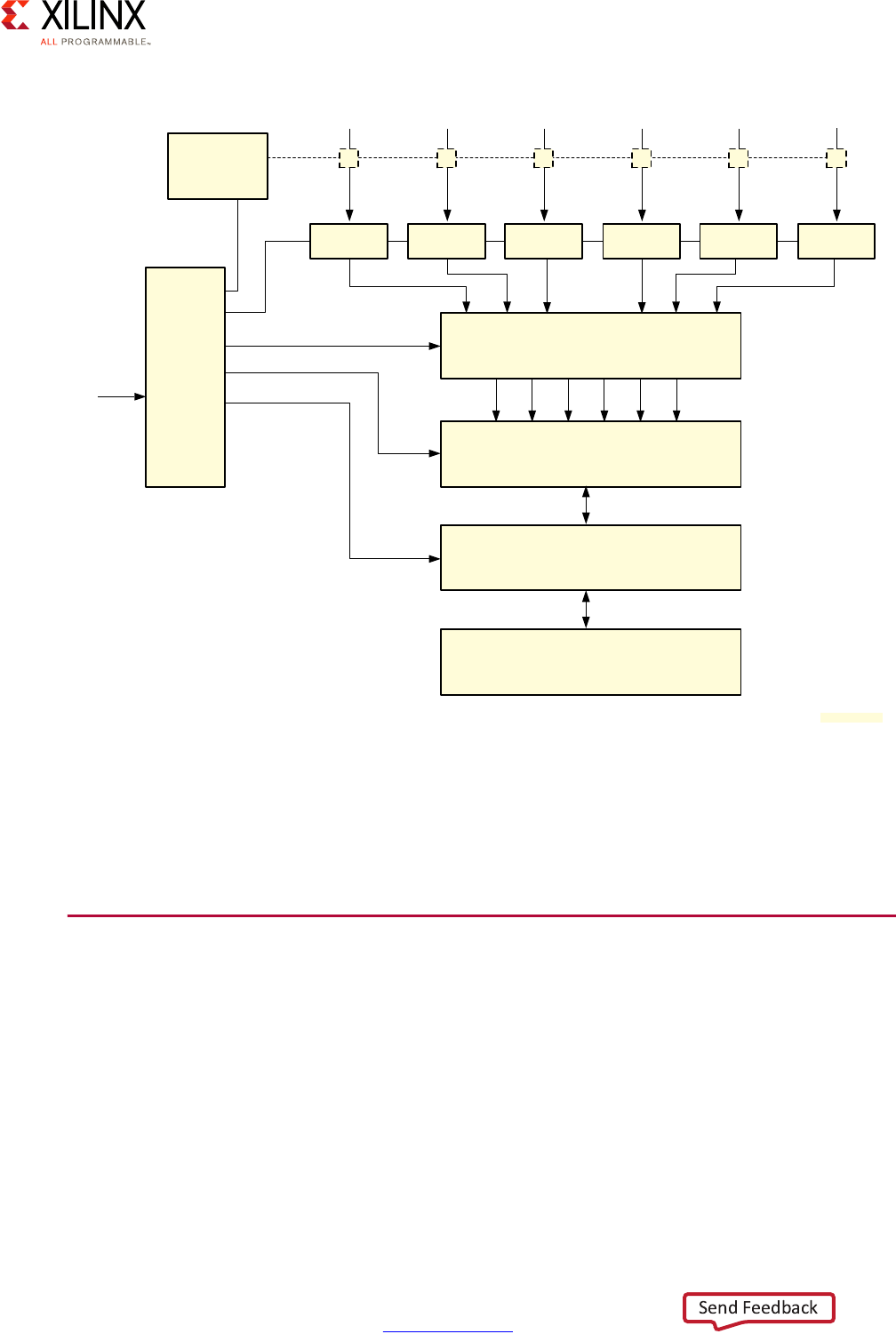

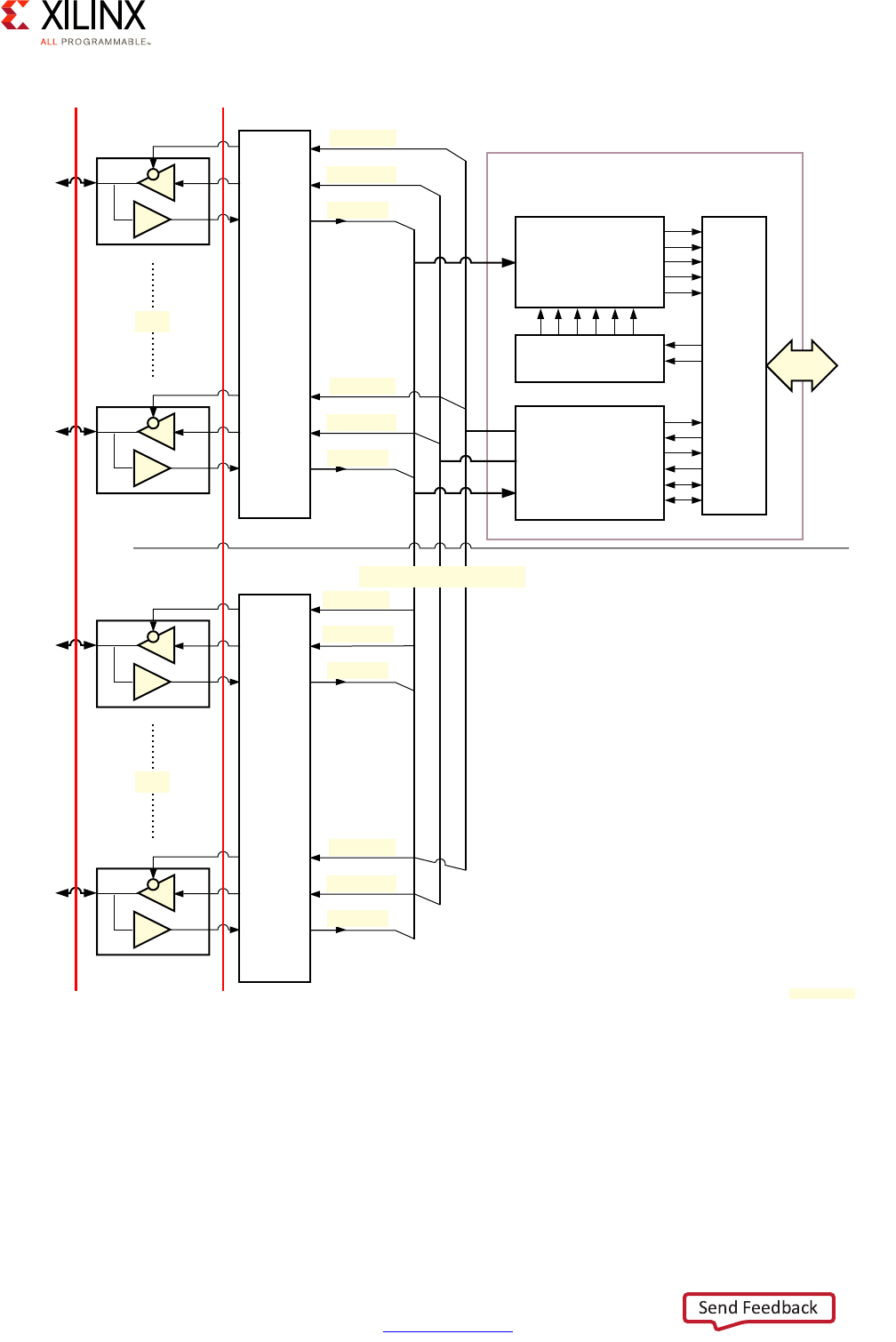

The following diagram illustrates the Zynq UltraScale+ MPSoC device's interrupt routing:

The block marked GIC-400 is the APU's GICv2 interrupt controller while the block marked

GIC (PL390) is the RPU's GICv1 interrupt controller.

APU Interrupt Controller

Each Cortex-A53 processor has four interrupt lines as input:

• nIRQ are normal priority interrupts

• nFIQ are high-priority or Fast Interrupts

• nVIRQ are normal priority virtual interrupts, for virtualization support on the APU

• nVFIQ are high priority virtual interrupts, for virtualization support on the APU

X-Ref Target - Figure 2-16

Figure 2-16: Zynq UltraScale+ MPSoC Device Interrupt Routing

Cortex-A53 MPCore

CPU0 CPUn

CPU0 I/F

VCPU0 I/F CPU0 I/F

VCPUn I/F

Distributor

RPU Cores

CPU0 CPU1

GIC (PL390)

CPU0 I/F CPU1 I/F

Distributor

niRQ0/nFIQ0

nViRQ/nVFIQ

niRQ0/nFIQ0 niRQ1/nFIQ1

Interrupt Source Blocks

(e.g., VCU, GPU,

DisplayPort)

PCIe

Top Level IPI

PCIe Inbound

To Interconnect

To PMU

To PL

To PMU

To PMU

MSI

Legacy IRQ/FiQ per CPU From PL

APU

GIC-400

X15327-092816

Zynq UltraScale+ MPSoC Embedded Design Methodology Guide 39

UG1228 (v1.0) March 31, 2017 www.xilinx.com

Chapter 2: Processing System

The APU's interrupt controller handling of the Cortex-A53 processors' interrupts is

illustrated in the following figure:

The interrupt controller is separated into 2 parts. The distributor is responsible for

registering the inbound interrupts and prioritizing them before distributing them to the

right target CPU. The second part of the interrupt controller interfaces with each CPU's

interrupt lines to trigger the actual interrupt on the relevant Cortex-A53 processor.

The interrupt controller handles 3 types of interrupts:

• 16 Software Generated Interrupts (SGI) for sending interrupts between cores

• 7 Private Peripherals Interrupt (PPI) are targeted to a single Cortex CPU core

• 92 Shared Peripherals interrupts (SPI) shared between all APU and RPU cores

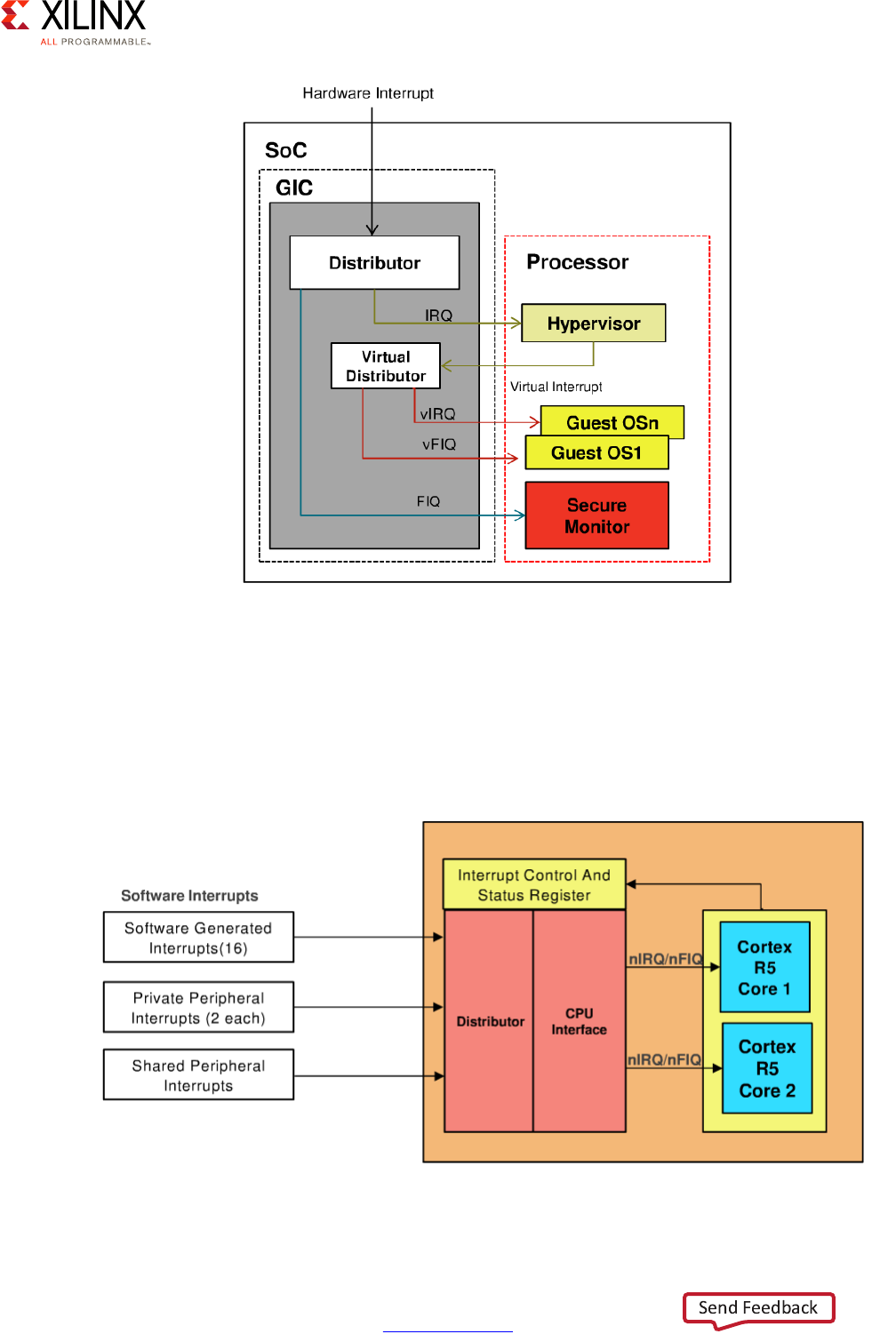

APU Interrupt Virtualization

When an interrupt is received while the APU is running a hypervisor, the hypervisor will

interface with the APU's interrupt controller to generate virtual interrupts for the guest

OSes as illustrated below. Those interrupt will be delivered directly to the guest OS which