Intel XScale Microarchitecture User’s Manual

Page Count: 198

- Intel® XScale™ Microarchitecture for the PXA255 Processor

- Introduction 1

- Programming Model 2

- Memory Management 3

- Instruction Cache 4

- Branch Target Buffer 5

- Data Cache 6

- Configuration 7

- 7.1 Overview

- 7.2 CP15 Registers

- 7.2.1 Register 0: ID & Cache Type Registers

- 7.2.2 Register 1: Control & Auxiliary Control Registers

- 7.2.3 Register 2: Translation Table Base Register

- 7.2.4 Register 3: Domain Access Control Register

- 7.2.5 Register 5: Fault Status Register

- 7.2.6 Register 6: Fault Address Register

- 7.2.7 Register 7: Cache Functions

- 7.2.8 Register 8: TLB Operations

- 7.2.9 Register 9: Cache Lock Down

- 7.2.10 Register 10: TLB Lock Down

- 7.2.11 Register 13: Process ID

- 7.2.12 Register 14: Breakpoint Registers

- 7.2.13 Register 15: Coprocessor Access Register

- 7.3 CP14 Registers

- Performance Monitoring 8

- Test 9

- 9.1 Boundary-Scan Architecture and Overview

- 9.2 Reset

- 9.3 Instruction Register

- 9.4 Test Data Registers

- 9.5 TAP Controller

- 9.5.1 Test Logic Reset State

- 9.5.2 Run-Test/Idle State

- 9.5.3 Select-DR-Scan State

- 9.5.4 Capture-DR State

- 9.5.5 Shift-DR State

- 9.5.6 Exit1-DR State

- 9.5.7 Pause-DR State

- 9.5.8 Exit2-DR State

- 9.5.9 Update-DR State

- 9.5.10 Select-IR Scan State

- 9.5.11 Capture-IR State

- 9.5.12 Shift-IR State

- 9.5.13 Exit1-IR State

- 9.5.14 Pause-IR State

- 9.5.15 Exit2-IR State

- 9.5.16 Update-IR State

- Software Debug 10

- 10.1 Introduction

- 10.2 Debug Registers

- 10.3 Debug Control and Status Register (DCSR)

- 10.4 Debug Exceptions

- 10.5 HW Breakpoint Resources

- 10.6 Software Breakpoints

- 10.7 Transmit/Receive Control Register (TXRXCTRL)

- 10.8 Transmit Register (TX)

- 10.9 Receive Register (RX)

- 10.10 Debug JTAG Access

- 10.11 Trace Buffer

- 10.12 Trace Buffer Entries

- 10.13 Downloading Code into the Instruction Cache

- 10.14 Halt Mode Software Protocol

- 10.15 Software Debug Notes

- Performance Considerations 11

- 11.1 Branch Prediction

- 11.2 Instruction Latencies

- 11.2.1 Performance Terms

- 11.2.2 Branch Instruction Timings

- 11.2.3 Data Processing Instruction Timings

- 11.2.4 Multiply Instruction Timings

- 11.2.5 Saturated Arithmetic Instructions

- 11.2.6 Status Register Access Instructions

- 11.2.7 Load/Store Instructions

- 11.2.8 Semaphore Instructions

- 11.2.9 Coprocessor Instructions

- 11.2.10 Miscellaneous Instruction Timing

- 11.2.11 Thumb Instructions

- 11.3 Interrupt Latency

- Optimization Guide A

- A.1 Introduction

- A.2 Intel® XScale™ Core Pipeline

- A.3 Basic Optimizations

- A.4 Cache and Prefetch Optimizations

- A.4.1 Instruction Cache

- A.4.2 Data and Mini Cache

- A.4.3 Cache Considerations

- A.4.4 Prefetch Considerations

- A.4.4.1. Prefetch Distances

- A.4.4.2. Prefetch Loop Scheduling

- A.4.4.3. Compute vs. Data Bus Bound

- A.4.4.4. Low Number of Iterations

- A.4.4.5. Bandwidth Limitations

- A.4.4.6. Cache Memory Considerations

- A.4.4.7. Cache Blocking

- A.4.4.8. Prefetch Unrolling

- A.4.4.9. Pointer Prefetch

- A.4.4.10. Loop Interchange

- A.4.4.11. Loop Fusion

- A.4.4.12. Prefetch to Reduce Register Pressure

- A.5 Instruction Scheduling

- A.5.1 Scheduling Loads

- A.5.2 Scheduling Data Processing Instructions

- A.5.3 Scheduling Multiply Instructions

- A.5.4 Scheduling SWP and SWPB Instructions

- A.5.5 Scheduling the MRA and MAR Instructions (MRRC/MCRR)

- A.5.6 Scheduling the MIA and MIAPH Instructions

- A.5.7 Scheduling MRS and MSR Instructions

- A.5.8 Scheduling Coprocessor Instructions

- A.6 Optimizations for Size

Intel® XScale™ Microarchitecture

for the PXA255 Processor

User’s Manual

March, 2003

Order Number: 278796

ii Intel® XScale™ Microarchitecture User’s Manual

Information in this document is provided in connection with Intel® products. No license, express or implied, by estoppel or otherwise, to any intellectual property rights is granted by this document. Except as provided in Intel's Terms and Conditions of Sale for such products, Intel assumes no liability whatsoever, and Intel disclaims any express or implied warranty, relating to sale and/or use of Intel® products including liability or warranties relating to fitness for a particular purpose, merchantability, or infringement of any patent, copyright or other intellectual property right. Intel products are not intended for use in medical, life saving, or life sustaining applications. Intel may make changes to specifications and product descriptions at any time, without notice.

Designers must not rely on the absence or characteristics of any features or instructions marked “reserved” or “undefined.” Intel reserves these for future definition and shall have no responsibility whatsoever for conflicts or incompatibilities arising from future changes to them.

The Intel® XScale™ Microarchitecture Users Manual for the PXA255 processor may contain design defects or errors known as errata which may cause the product to deviate from published specifications. Current characterized errata are available on request.

Contact your local Intel sales office or your distributor to obtain the latest specifications and before placing your product order.

Copies of documents which have an ordering number and are referenced in this document, or other Intel literature may be obtained by calling 1-800-548-4725 or by visiting Intel's website at http://www.intel.com.

Copyright © Intel Corporation, 2003

* Other names and brands may be claimed as the property of others.

ARM and StrongARM are registered trademarks of ARM, Ltd.

Contents

1 Introduction

1.1 About This Document

1.1.1 How to Read This Document

1.1.2 Other Relevant Documents

1.2 High-Level Overview of the Intel® XScale™ core as Implemented in the Application Processors

1.2.1 ARM* Compatibility

1.2.2 Features

1.2.2.1 Multiply/Accumulate (MAC)

1.2.2.2 Memory Management

1.2.2.3 Instruction Cache

1.2.2.4 Branch Target Buffer

1.2.2.5 Data Cache

1.2.2.6 Fill Buffer & Write Buffer

1.2.2.7 Performance Monitoring

1.2.2.8 Power Management

1.2.2.9 Debug

1.3 Terminology and Conventions

1.3.1 Number Representation

1.3.2 Terminology and Acronyms

2 Programming Model

2.1 ARM* Architecture Compatibility

2.2 ARM* Architecture Implementation Options

2.2.1 Big Endian versus Little Endian

2.2.2 Thumb

2.2.3 ARM* DSP-Enhanced Instruction Set

2.2.4 Base Register Update

2.3 Extensions to ARM* Architecture

2.3.1 DSP Coprocessor 0 (CP0)

2.3.1.1 Multiply With Internal Accumulate Format

2.3.1.2 Internal Accumulator Access Format

2.3.2 New Page Attributes

2.3.3 Additions to CP15 Functionality

2.3.4 Event Architecture

2.3.4.1 Exception Summary

2.3.4.2 Event Priority

2.3.4.3 Prefetch Aborts

2.3.4.4 Data Aborts

2.3.4.5 Events from Preload Instructions

2.3.4.6 Debug Events

3 Memory Management

3.1 Overview

3.2 Architecture Model

3.2.1 Version 4 vs. Version 5

3.2.2 Instruction Cache

3.2.3 Data Cache and Write Buffer

3.2.4 Details on Data Cache and Write Buffer Behavior

3.2.5 Memory Operation Ordering

3.2.6 Exceptions

3.3 Interaction of the MMU, Instruction Cache, and Data Cache

3.4 Control

3.4.1 Invalidate (Flush) Operation

3.4.2 Enabling/Disabling

3.4.3 Locking Entries

3.4.4 Round-Robin Replacement Algorithm

4 Instruction Cache

4.1 Overview

4.2 Operation

4.2.1 Instruction Cache is Enabled

4.2.2 The Instruction Cache Is Disabled

4.2.3 Fetch Policy

4.2.4 Round-Robin Replacement Algorithm

4.2.5 Parity Protection

4.2.6 Instruction Fetch Latency

4.2.7 Instruction Cache Coherency

4.3 Instruction Cache Control

4.3.1 Instruction Cache State at RESET

4.3.2 Enabling/Disabling

4.3.3 Invalidating the Instruction Cache

4.3.4 Locking Instructions in the Instruction Cache

4.3.5 Unlocking Instructions in the Instruction Cache

5 Branch Target Buffer

5.1 Branch Target Buffer (BTB) Operation

5.1.1 Reset

5.1.2 Update Policy

5.2 BTB Control

5.2.1 Disabling/Enabling

5.2.2 Invalidation

6 Data Cache

6.1 Overviews

6.1.1 Data Cache Overview

6.1.2 Mini-Data Cache Overview

6.1.3 Write Buffer and Fill Buffer Overview

6.2 Data Cache and Mini-Data Cache Operation

6.2.1 Operation When Caching is Enabled

6.2.2 Operation When Data Caching is Disabled

6.2.3 Cache Policies

6.2.3.1 Cacheability

6.2.3.2 Read Miss Policy

6.2.3.3 Write Miss Policy

6.2.3.4 Write-Back Versus Write-Through

6.2.4 Round-Robin Replacement Algorithm

6.2.5 Parity Protection

6.2.6 Atomic Accesses

6.3 Data Cache and Mini-Data Cache Control

6.3.1 Data Memory State After Reset

6.3.2 Enabling/Disabling

6.3.3 Invalidate & Clean Operations

6.3.3.1 Global Clean and Invalidate Operation

6.4 Re-configuring the Data Cache as Data RAM

6.5 Write Buffer/Fill Buffer Operation and Control

7 Configuration

7.1 Overview

7.2 CP15 Registers

7.2.1 Register 0: ID & Cache Type Registers

7.2.2 Register 1: Control & Auxiliary Control Registers

7.2.3 Register 2: Translation Table Base Register

7.2.4 Register 3: Domain Access Control Register

7.2.5 Register 5: Fault Status Register

7.2.6 Register 6: Fault Address Register

7.2.7 Register 7: Cache Functions

7.2.8 Register 8: TLB Operations

7.2.9 Register 9: Cache Lock Down

7.2.10 Register 10: TLB Lock Down

7.2.11 Register 13: Process ID

7.2.11.1 The PID Register Affect On Addresses

7.2.12 Register 14: Breakpoint Registers

7.2.13 Register 15: Coprocessor Access Register

7.3 CP14 Registers

7.3.1 Registers 0-3: Performance Monitoring

7.3.2 Registers 6-7: Clock and Power Management

7.3.3 Registers 8-15: Software Debug

8 Performance Monitoring

8.1 Overview

8.2 Clock Counter (CCNT; CP14 - Register 1)

8.3 Performance Count Registers (PMN0 - PMN1; CP14 - Register 2 and 3, Respectively)

8.3.1 Extending Count Duration Beyond 32 Bits

8.4 Performance Monitor Control Register (PMNC)

8.4.1 Managing the PMNC

8.5 Performance Monitoring Events

8.5.1 Instruction Cache Efficiency Mode

8.5.2 Data Cache Efficiency Mode

8.5.3 Instruction Fetch Latency Mode

8.5.4 Data/Bus Request Buffer Full Mode

8.5.5 Stall/Writeback Statistics Mode

8.5.6 Instruction TLB Efficiency Mode

8.5.7 Data TLB Efficiency Mode

8.6 Multiple Performance Monitoring Run Statistics

8.7 Examples

9 Test

9.1 Boundary-Scan Architecture and Overview

9.2 Reset

9.3 Instruction Register

9.3.1 Boundary-Scan Instruction Set

9.4 Test Data Registers

9.4.1 Bypass Register

9.4.2 Boundary-Scan Register

9.4.3 Device Identification (ID) Code Register

9.4.4 Data Specific Registers

9.5 TAP Controller

9.5.1 Test Logic Reset State

9.5.2 Run-Test/Idle State

9.5.3 Select-DR-Scan State

9.5.4 Capture-DR State

9.5.5 Shift-DR State

9.5.6 Exit1-DR State

9.5.7 Pause-DR State

9.5.8 Exit2-DR State

9.5.9 Update-DR State

9.5.10 Select-IR Scan State

9.5.11 Capture-IR State

9.5.12 Shift-IR State

9.5.13 Exit1-IR State

9.5.14 Pause-IR State

9.5.15 Exit2-IR State

9.5.16 Update-IR State

10 Software Debug

10.1 Introduction

10.1.1 Halt Mode

10.1.2 Monitor Mode

10.2 Debug Registers

10.3 Debug Control and Status Register (DCSR)

10.3.1 Global Enable Bit (GE)

10.3.2 Halt Mode Bit (H)

10.3.3 Vector Trap Bits (TF,TI,TD,TA,TS,TU,TR)

10.3.4 Sticky Abort Bit (SA)

10.3.5 Method of Entry Bits (MOE)

10.3.6 Trace Buffer Mode Bit (M)

10.3.7 Trace Buffer Enable Bit (E)

10.4 Debug Exceptions

10.4.1 Halt Mode

10.4.2 Monitor Mode

10.5 HW Breakpoint Resources

10.5.1 Instruction Breakpoints

10.5.2 Data Breakpoints

10.6 Software Breakpoints

10.7 Transmit/Receive Control Register (TXRXCTRL)

10.7.1 RX Register Ready Bit (RR)

10.7.2 Overflow Flag (OV)

10.7.3 Download Flag (D)

10.7.4 TX Register Ready Bit (TR)

10.7.5 Conditional Execution Using TXRXCTRL

10.8 Transmit Register (TX)

10.9 Receive Register (RX)

10.10 Debug JTAG Access

10.10.1 SELDCSR JTAG Command

10.10.2 SELDCSR JTAG Register

10.10.2.1 DBG.HLD_RST

10.10.2.2 DBG.BRK

10.10.2.3 DBG.DCSR

10.10.3 DBGTX JTAG Command

10.10.4 DBGTX JTAG Register

10.10.5 DBGRX JTAG Command

10.10.6 DBGRX JTAG Register

10.10.6.1 RX Write Logic

10.10.6.2 DBGRX Data Register

10.10.6.3 DBG.RR

10.10.6.4 DBG.V

10.10.6.5 DBG.RX

10.10.6.6 DBG.D

10.10.6.7 DBG.FLUSH

10.10.7 Debug JTAG Data Register Reset Values

10.11 Trace Buffer

10.11.1 Trace Buffer CP Registers

10.11.1.1 Checkpoint Registers

10.11.1.2 Trace Buffer Register (TBREG)

10.11.2 Trace Buffer Usage

10.12 Trace Buffer Entries

10.12.1 Message Byte

10.12.1.1 Exception Message Byte

10.12.1.2 Non-exception Message Byte

10.12.1.3 Address Bytes

10.13 Downloading Code into the Instruction Cache

10.13.1 LDIC JTAG Command

10.13.2 LDIC JTAG Data Register

10.13.3 LDIC Cache Functions

10.13.4 Loading IC During Reset

10.13.4.1 Loading IC During Cold Reset for Debug

10.13.4.2 Loading IC During a Warm Reset for Debug

10.13.5 Dynamically Loading IC After Reset

10.13.5.1 Dynamic Code Download Synchronization

10.13.6 Mini Instruction Cache Overview ......................................................................10-40

10.14 Halt Mode Software Protocol .........................................................................................10-40

10.14.1 Starting a Debug Session .................................................................................10-40

10.14.1.1 Setting up Override Vector Tables....................................................10-41

10.14.1.2 Placing the Handler in Memory.........................................................10-41

10.14.2 Implementing a Debug Handler ........................................................................10-42

10.14.2.1 Debug Handler Entry ........................................................................10-42

10.14.2.2 Debug Handler Restrictions ..............................................................10-42

10.14.2.3 Dynamic Debug Handler...................................................................10-43

10.14.2.4 High-Speed Download ......................................................................10-44

10.14.3 Ending a Debug Session ..................................................................................10-45


10.15 Software Debug Notes

11 Performance Considerations

11.1 Branch Prediction

11.2 Instruction Latencies

11.2.1 Performance Terms

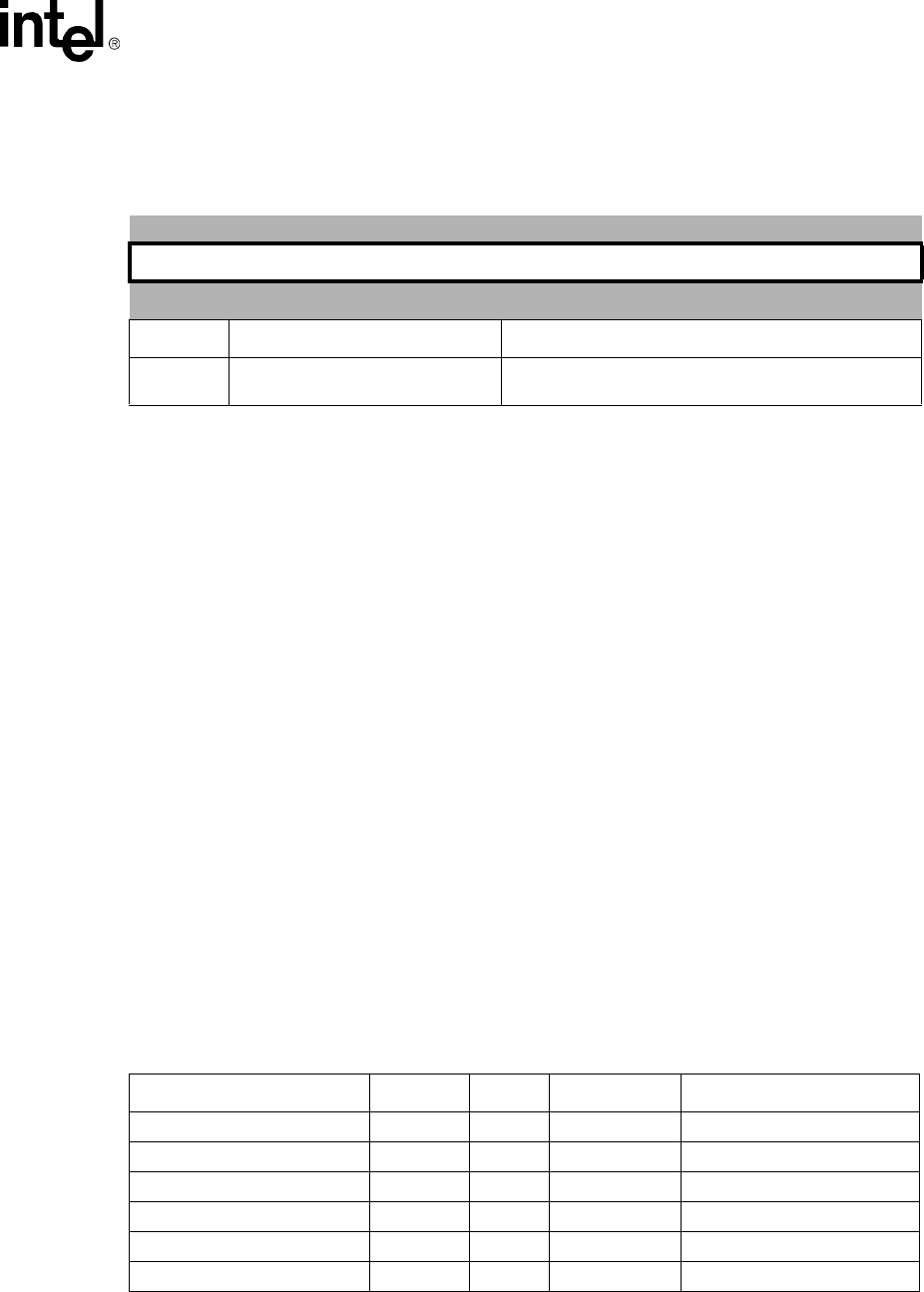

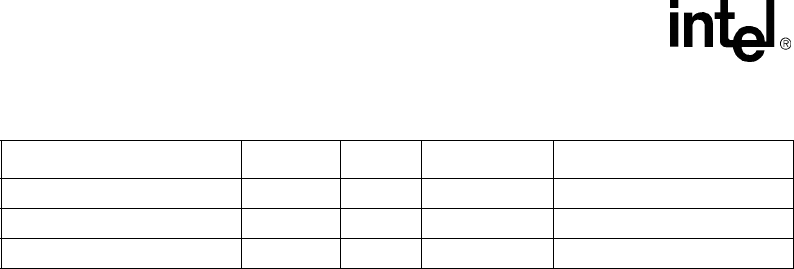

11.2.2 Branch Instruction Timings

11.2.3 Data Processing Instruction Timings

11.2.4 Multiply Instruction Timings

11.2.5 Saturated Arithmetic Instructions

11.2.6 Status Register Access Instructions

11.2.7 Load/Store Instructions

11.2.8 Semaphore Instructions

11.2.9 Coprocessor Instructions

11.2.10 Miscellaneous Instruction Timing

11.2.11 Thumb Instructions

11.3 Interrupt Latency

A Optimization Guide

A.1 Introduction

A.1.1 About This Guide

A.2 Intel® XScale™ Core Pipeline

A.2.1 General Pipeline Characteristics

A.2.1.1. Number of Pipeline Stages

A.2.1.2. Intel® XScale™ Core Pipeline Organization

A.2.1.3. Out Of Order Completion

A.2.1.4. Register Dependencies

A.2.1.5. Use of Bypassing

A.2.2 Instruction Flow Through the Pipeline

A.2.2.1. ARM* v5 Instruction Execution

A.2.2.2. Pipeline Stalls

A.2.3 Main Execution Pipeline

A.2.3.1. F1 / F2 (Instruction Fetch) Pipestages

A.2.3.2. ID (Instruction Decode) Pipestage

A.2.3.3. RF (Register File / Shifter) Pipestage

A.2.3.4. X1 (Execute) Pipestages

A.2.3.5. X2 (Execute 2) Pipestage

A.2.3.6. XWB (write-back)

A.2.4 Memory Pipeline

A.2.4.1. D1 and D2 Pipestage

A.2.5 Multiply/Multiply Accumulate (MAC) Pipeline

A.2.5.1. Behavioral Description

A.3 Basic Optimizations

A.3.1 Conditional Instructions

A.3.1.1. Optimizing Condition Checks

A.3.1.2. Optimizing Branches

A.3.1.3. Optimizing Complex Expressions

A.3.2 Bit Field Manipulation

A.3.3 Optimizing the Use of Immediate Values

A.3.4 Optimizing Integer Multiply and Divide

A.3.5 Effective Use of Addressing Modes


A.4 Cache and Prefetch Optimizations

A.4.1 Instruction Cache

A.4.1.1. Cache Miss Cost

A.4.1.2. Round Robin Replacement Cache Policy

A.4.1.3. Code Placement to Reduce Cache Misses

A.4.1.4. Locking Code into the Instruction Cache

A.4.2 Data and Mini Cache

A.4.2.1. Non Cacheable Regions

A.4.2.2. Write-through and Write-back Cached Memory Regions

A.4.2.3. Read Allocate and Read-write Allocate Memory Regions

A.4.2.4. Creating On-chip RAM

A.4.2.5. Mini-data Cache

A.4.2.6. Data Alignment

A.4.2.7. Literal Pools

A.4.3 Cache Considerations

A.4.3.1. Cache Conflicts, Pollution and Pressure

A.4.3.2. Memory Page Thrashing

A.4.4 Prefetch Considerations

A.4.4.1. Prefetch Distances

A.4.4.2. Prefetch Loop Scheduling

A.4.4.3. Compute vs. Data Bus Bound

A.4.4.4. Low Number of Iterations

A.4.4.5. Bandwidth Limitations

A.4.4.6. Cache Memory Considerations

A.4.4.7. Cache Blocking

A.4.4.8. Prefetch Unrolling

A.4.4.9. Pointer Prefetch

A.4.4.10. Loop Interchange

A.4.4.11. Loop Fusion

A.4.4.12. Prefetch to Reduce Register Pressure

A.5 Instruction Scheduling

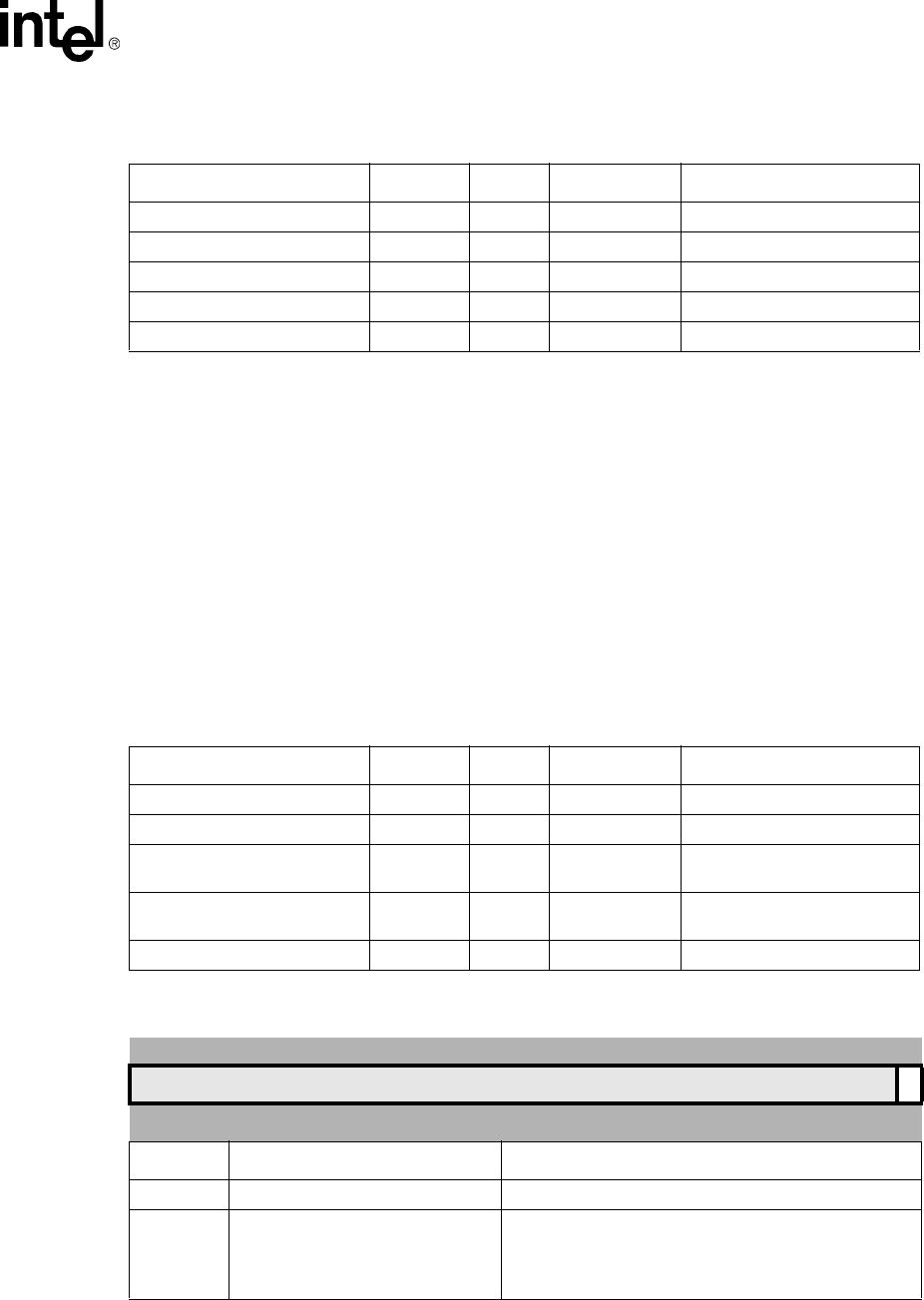

A.5.1 Scheduling Loads

A.5.1.1. Scheduling Load and Store Double (LDRD/STRD)

A.5.1.2. Scheduling Load and Store Multiple (LDM/STM)

A.5.2 Scheduling Data Processing Instructions

A.5.3 Scheduling Multiply Instructions

A.5.4 Scheduling SWP and SWPB Instructions

A.5.5 Scheduling the MRA and MAR Instructions (MRRC/MCRR)

A.5.6 Scheduling the MIA and MIAPH Instructions

A.5.7 Scheduling MRS and MSR Instructions

A.5.8 Scheduling Coprocessor Instructions

A.6 Optimizations for Size

A.6.1 Multiple Word Load and Store

A.6.2 Use of Conditional Instructions

A.6.3 Use of PLD Instructions

A.6.4 Thumb Instructions

Figures

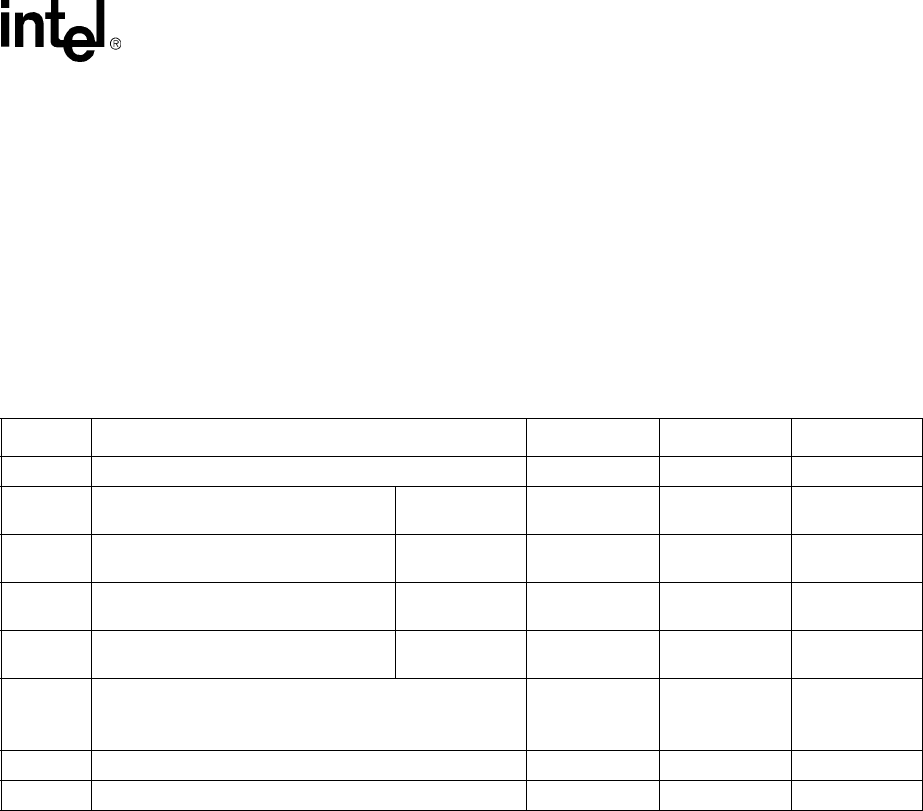

1-1 Intel® XScale™ Microarchitecture Architecture Features

3-1 Example of Locked Entries in TLB

4-1 Instruction Cache Organization

4-2 Locked Line Effect on Round Robin Replacement


5-1 BTB Entry

5-2 Branch History

6-1 Data Cache Organization

6-2 Mini-Data Cache Organization

6-3 Locked Line Effect on Round Robin Replacement

9-1 Test Access Port (TAP) Block Diagram

9-2 BSDL code for 256-MBGA package

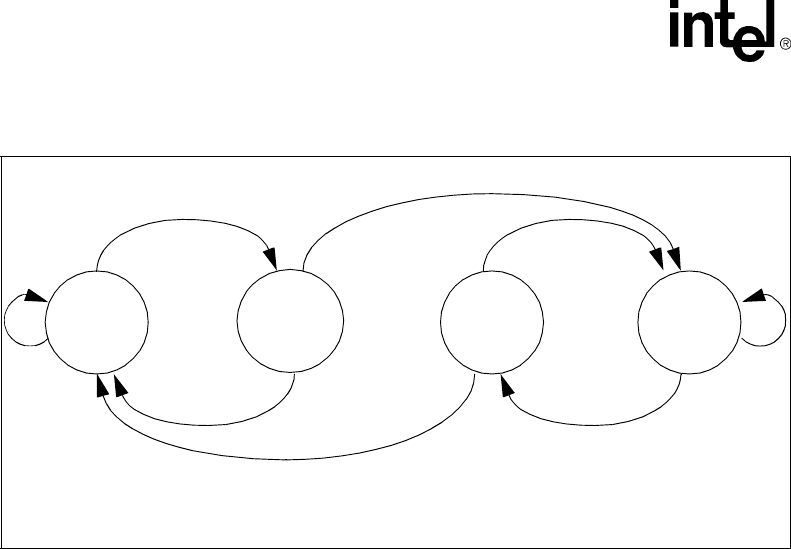

9-3 TAP Controller State Diagram

10-1 SELDCSR Hardware

10-2 DBGTX Hardware

10-3 DBGRX Hardware

10-4 RX Write Logic

10-5 DBGRX Data Register

10-6 High Level View of Trace Buffer

10-7 Message Byte Formats

10-8 Indirect Branch Entry Address Byte Organization

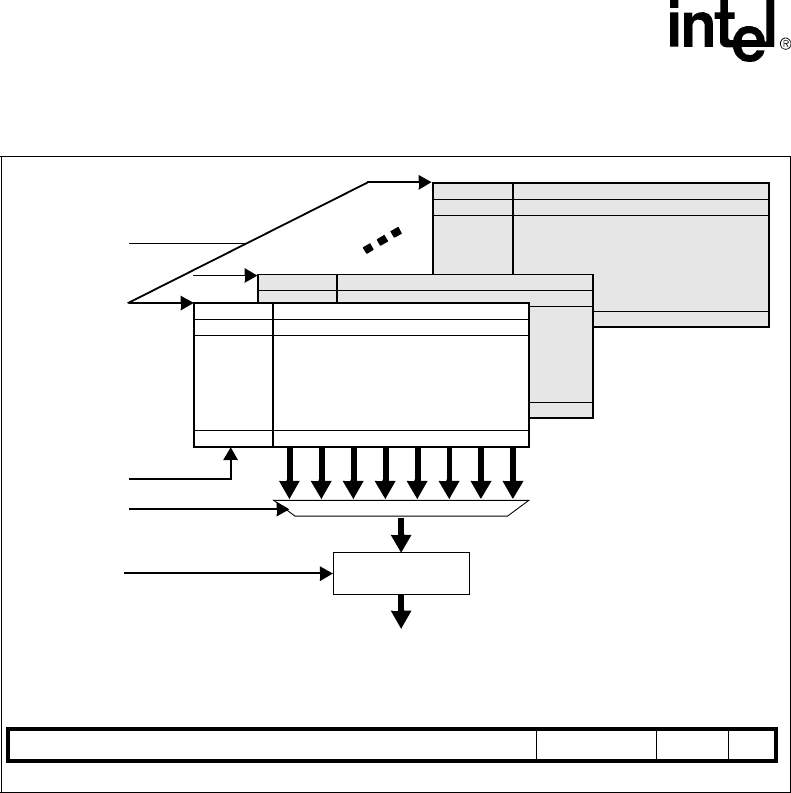

10-9 LDIC JTAG Data Register Hardware

10-10 Format of LDIC Cache Functions

10-11 Code Download During a Cold Reset For Debug

10-12 Code Download During a Warm Reset For Debug

10-13 Downloading Code in IC During Program Execution

A-1 Intel® XScale™ Core RISC Superpipeline

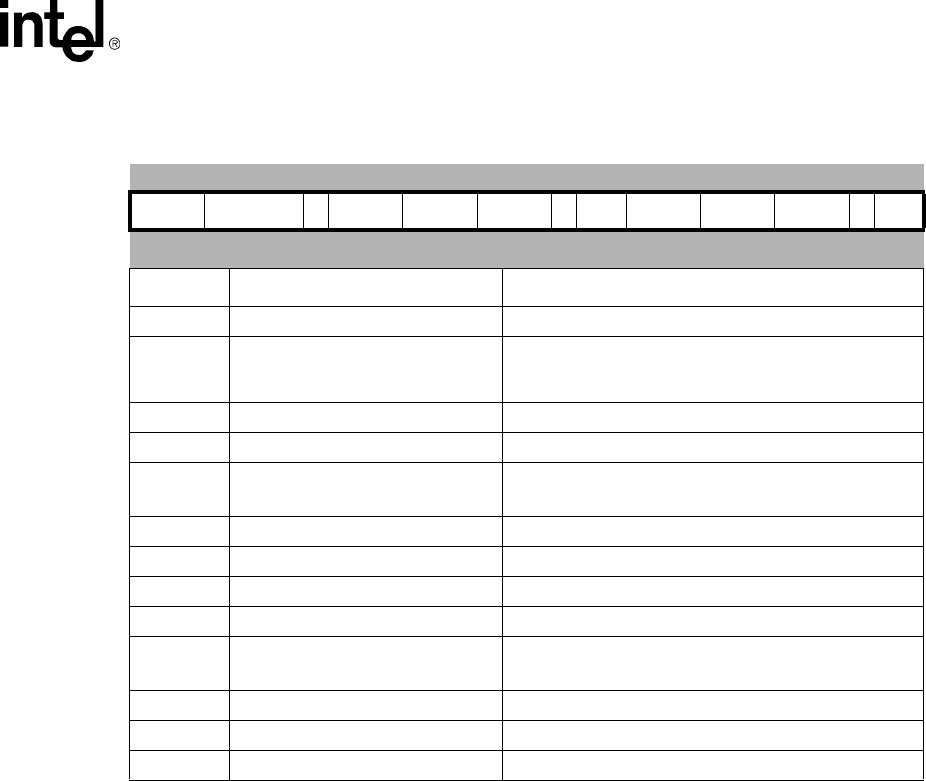

Tables

2-1 Multiply with Internal Accumulate Format

2-2 MIA{<cond>} acc0, Rm, Rs

2-3 MIAPH{<cond>} acc0, Rm, Rs

2-4 MIAxy{<cond>} acc0, Rm, Rs

2-5 Internal Accumulator Access Format

2-6 MAR{<cond>} acc0, RdLo, RdHi

2-7 MRA{<cond>} RdLo, RdHi, acc0

2-8 First-level Descriptors

2-9 Second-level Descriptors for Coarse Page Table

2-10 Second-level Descriptors for Fine Page Table

2-11 Exception Summary

2-12 Event Priority

2-13 Intel® XScale™ Core Encoding of Fault Status for Prefetch Aborts

2-14 Intel® XScale™ Core Encoding of Fault Status for Data Aborts

3-1 Data Cache and Buffer Behavior when X = 0

3-2 Data Cache and Buffer Behavior when X = 1

3-3 Memory Operations that Impose a Fence

3-4 Valid MMU & Data/mini-data Cache Combinations

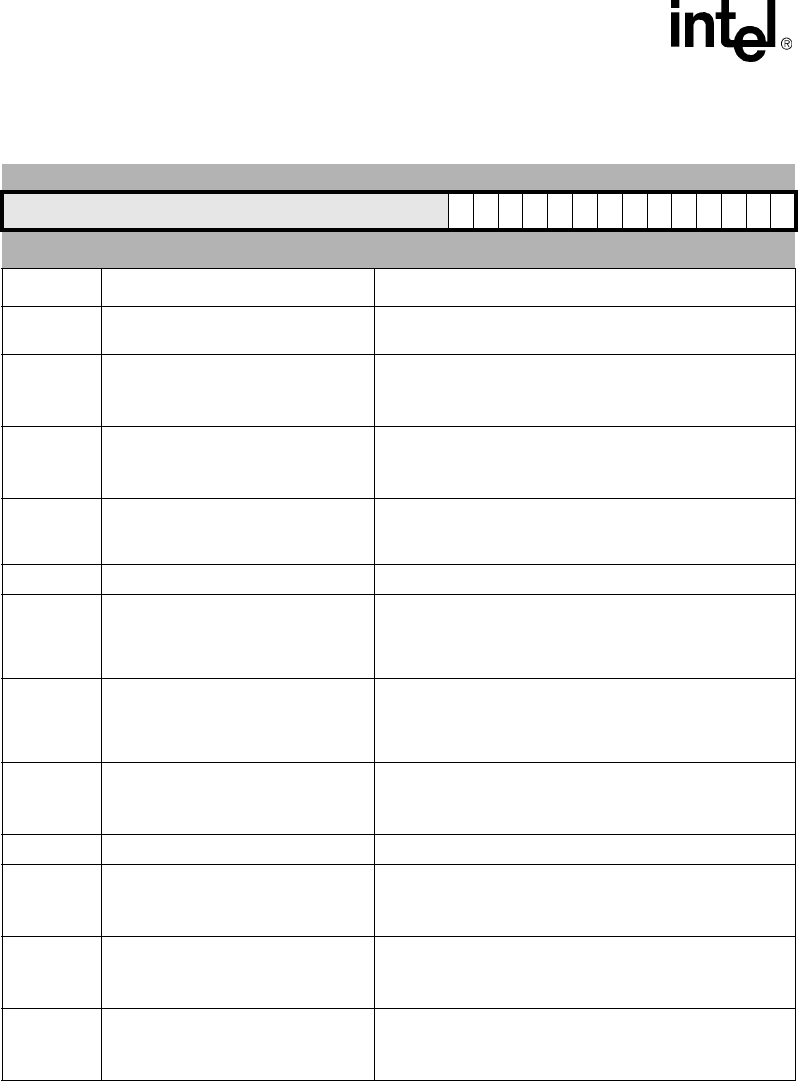

7-1 MRC/MCR Format

7-2 LDC/STC Format when Accessing CP14

7-3 CP15 Registers

7-4 ID Register

7-5 Cache Type Register

7-6 ARM* Control Register

7-7 Auxiliary Control Register


7-8 Translation Table Base Register

7-9 Domain Access Control Register

7-10 Fault Status Register

7-11 Fault Address Register

7-12 Cache Functions

7-13 TLB Functions

7-14 Cache Lockdown Functions

7-15 Data Cache Lock Register

7-16 TLB Lockdown Functions

7-17 Accessing Process ID

7-18 Process ID Register

7-19 Accessing the Debug Registers

7-20 Coprocessor Access Register

7-21 CP14 Registers

7-22 Accessing the Performance Monitoring Registers

7-23 PWRMODE Register 7

7-24 CCLKCFG Register 6

7-25 Clock and Power Management valid operations

7-26 Accessing the Debug Registers

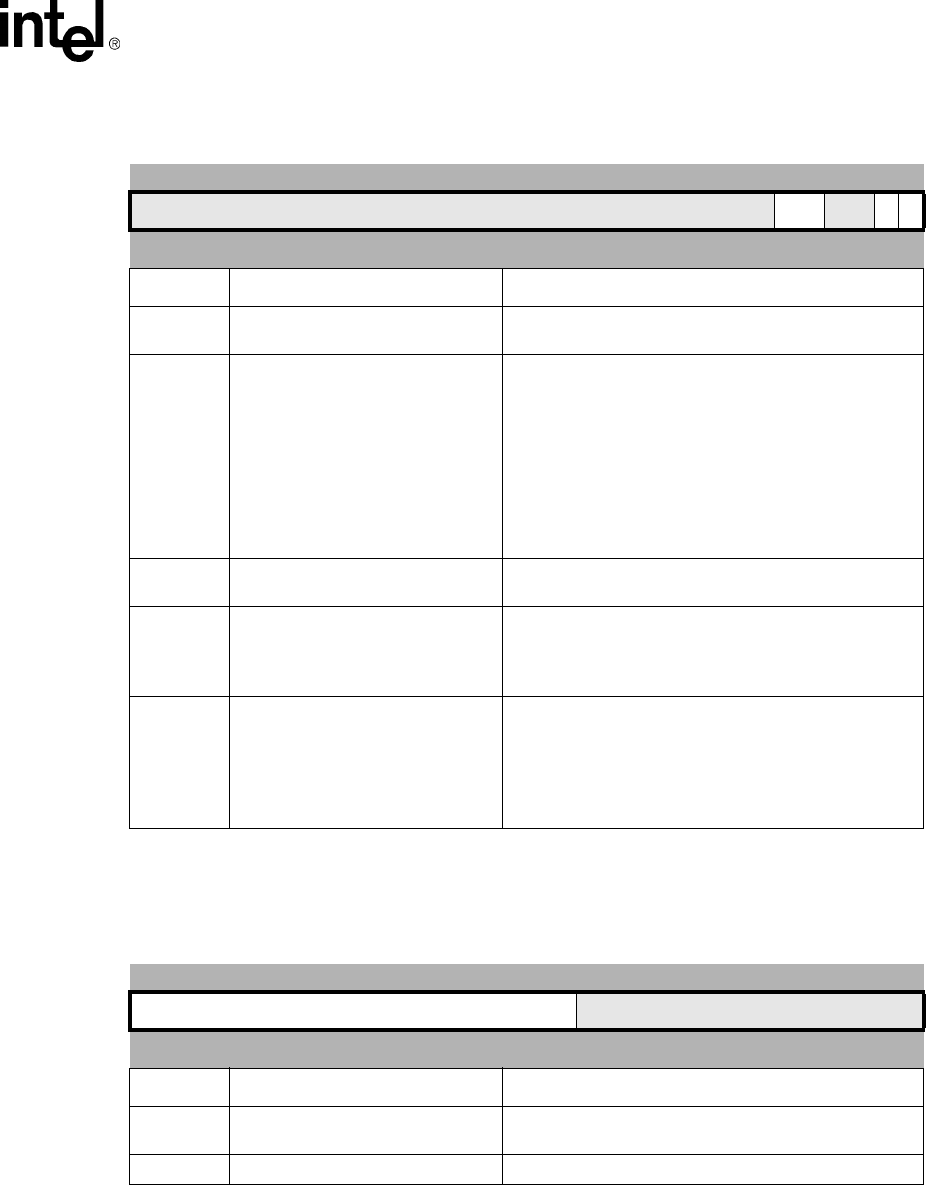

8-1 Clock Count Register (CCNT)

8-2 Performance Monitor Count Register (PMN0 and PMN1)

8-3 Performance Monitor Control Register (CP14, register 0)

8-4 Performance Monitoring Events

8-5 Some Common Uses of the PMU

9-1 TAP Controller Pin Definitions

9-2 JTAG Instruction Codes

9-3 JTAG Instruction Descriptions

10-1 Coprocessor 15 Debug Registers

10-2 Coprocessor 14 Debug Registers

10-3 Debug Control and Status Register (DCSR)

10-4 Event Priority

10-5 Instruction Breakpoint Address and Control Register (IBCRx)

10-6 Data Breakpoint Register (DBRx)

10-7 Data Breakpoint Controls Register (DBCON)

10-8 TX RX Control Register (TXRXCTRL)

10-9 Normal RX Handshaking

10-10 High-Speed Download Handshaking States

10-11 TX Handshaking

10-12 TXRXCTRL Mnemonic Extensions

10-13 TX Register

10-14 RX Register

10-15 DEBUG Data Register Reset Values

10-16 CP 14 Trace Buffer Register Summary

10-17 Checkpoint Register (CHKPTx)

10-18 TBREG Format

10-19 Message Byte Formats

10-20 LDIC Cache Functions

11-1 Branch Latency Penalty

11-2 Latency Example

11-3 Branch Instruction Timings (Those predicted by the BTB)


11-4 Branch Instruction Timings (Those not predicted by the BTB)

11-5 Data Processing Instruction Timings

11-6 Multiply Instruction Timings

11-7 Multiply Implicit Accumulate Instruction Timings

11-8 Implicit Accumulator Access Instruction Timings

11-9 Saturated Data Processing Instruction Timings

11-10 Status Register Access Instruction Timings

11-11 Load and Store Instruction Timings

11-12 Load and Store Multiple Instruction Timings

11-13 Semaphore Instruction Timings

11-14 CP15 Register Access Instruction Timings

11-15 CP14 Register Access Instruction Timings

11-16 SWI Instruction Timings

11-17 Count Leading Zeros Instruction Timings

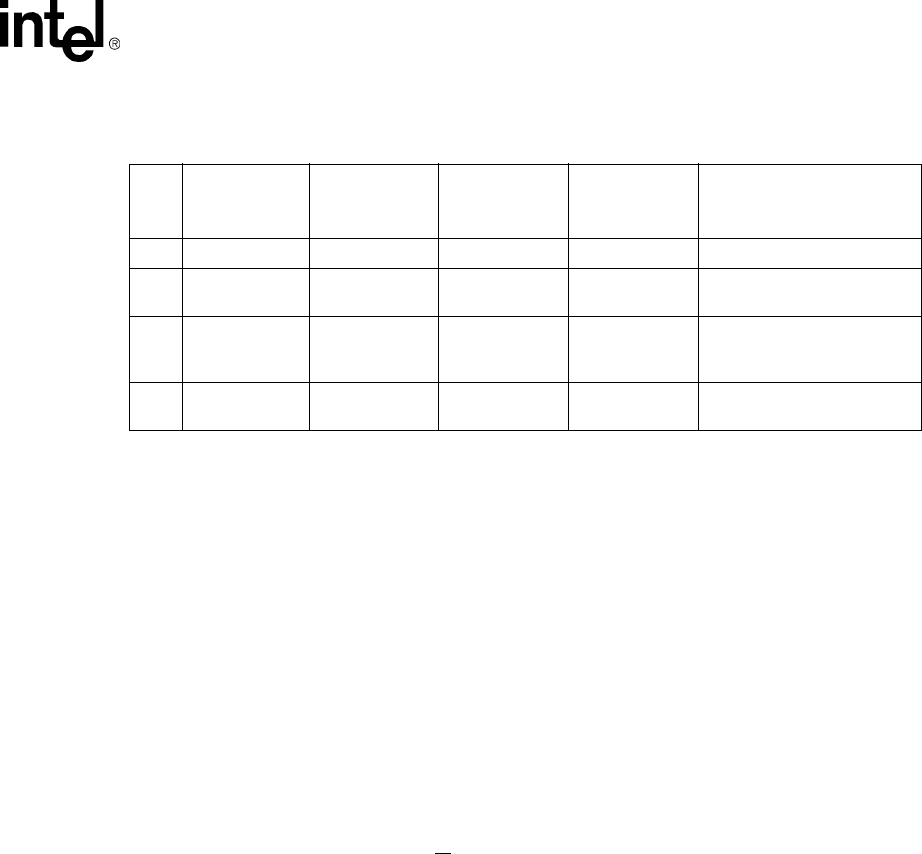

A-1 Pipelines and Pipe stages


Introduction

1

1.1 About This Document

This document describes the Intel® XScale™ core as implemented in the PXA255 processor.

Intel Corporation assumes no responsibility for any errors that may appear in this document, nor does it make a commitment to update the information contained herein.

Intel retains the right to make changes to these specifications at any time, without notice. In particular, descriptions of features, timings, and pin-outs do not imply a commitment to implement them.

1.1.1 How to Read This Document

Familiarity with the ARM* Version 5TE Architecture is necessary to understand some aspects of this document.

Each chapter in this document focuses on a specific architectural feature of the Intel® XScale™ core.

• Chapter 2, “Programming Model”

• Chapter 3, “Memory Management”

• Chapter 4, “Instruction Cache”

• Chapter 5, “Branch Target Buffer”

• Chapter 6, “Data Cache”

• Chapter 7, “Configuration”

• Chapter 8, “Performance Monitoring”

• Chapter 10, “Software Debug”

• Chapter 11, “Performance Considerations”

• Appendix A, “Optimization Guide” covers instruction scheduling techniques.

Note: Most of the “buzz words” and acronyms found throughout this document are captured in Section 1.3.2, “Terminology and Acronyms” on page 1-6, located at the end of this chapter.

1.1.2 Other Relevant Documents

• ARM* Architecture Reference Manual, Document Number: ARM DDI 0100E

This document describes the ARM* Architecture and is publicly available. See http://www.arm.com/ARMARM for details. Sold as: ARM* Architecture Reference Manual, Second Edition, edited by David Seal: Addison-Wesley: ISBN 0-201-73719-1

• Intel® PXA255 Processor Developer’s Manual, Intel Order # 278693


•Intel® PXA255 Processor Design Guide, Intel Order # 278694

•Intel® 80200 Processor Development Manual, Intel Order #273411

This document describes the first implementation of the Intel® XScale™ Microarchitecture in a microprocessor targeted at I/O applications.

Available from http://developer.intel.com

1.2 High-Level Overview of the Intel® XScale™ core as Implemented in the Application Processors

The Intel® XScale™ core is an ARM* V5TE compliant microprocessor. It is a high-performance, low-power device that leads the industry in MIPS/mW. The core is not intended to be delivered as a stand-alone product but as a building block for an ASSP (Application Specific Standard Product) for embedded markets such as handheld devices, networking, storage, and remote access servers. The PXA255 processor is an example of an ASSP designed primarily for handheld devices. This document limits itself to describing the Intel® XScale™ core as it is implemented in the PXA255 processor. In almost every attribute, the Intel® XScale™ core used in the application processor is identical to the Intel® XScale™ core implemented in the Intel® 80200.

The Intel® XScale™ core incorporates an extensive list of microarchitecture features that allow it to achieve high performance. This rich feature set lets you select the features that obtain the best performance for your application. Many of the microarchitectural features added to the Intel® XScale™ core help hide memory latency, which is often a serious impediment to high-performance processors. These include:

•The ability to continue instruction execution even while the data cache is retrieving data from external memory

•A write buffer

•Write-back caching

•Various data cache allocation policies which can be configured differently for each application

•Cache locking

All these features improve the efficiency of the memory bus external to the core.

The Intel® XScale™ core efficiently handles audio processing through the support of 16-bit data

types and enhanced 16-bit operations. These audio coding enhancements center around multiply

and accumulate operations which accelerate many of the audio filtering and multimedia CODEC

algorithms.

1.2.1 ARM* Compatibility

The ARM* Version 5 (V5) Architecture added new features to ARM* Version 4, including floating-point instructions, among others. The Intel® XScale™ core implements the integer instruction set of ARM* V5, but does not provide hardware support for any of the floating-point instructions.

The Intel® XScale™ core provides the ARM* V5T Thumb instruction set and the ARM* V5E

DSP extensions. To further enhance multimedia applications, the Intel® XScale™ core includes

additional Multiply-Accumulate functionality as the first instantiation of Intel® Media Processing

Technology. These new operations from Intel are mapped into ARM* coprocessor space.

Backward compatibility with StrongARM* products is maintained for user-mode applications.

Operating systems may require modifications to match the specific hardware features of the Intel®

XScale™ core and to take advantage of the performance enhancements added.

1.2.2 Features

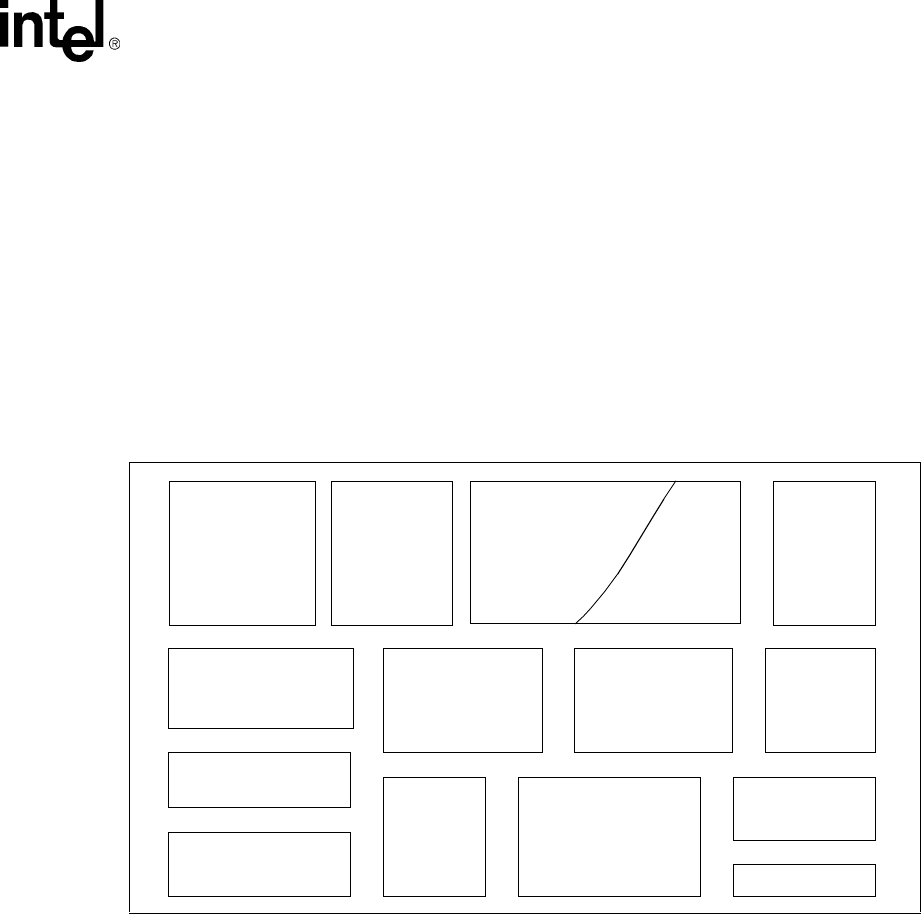

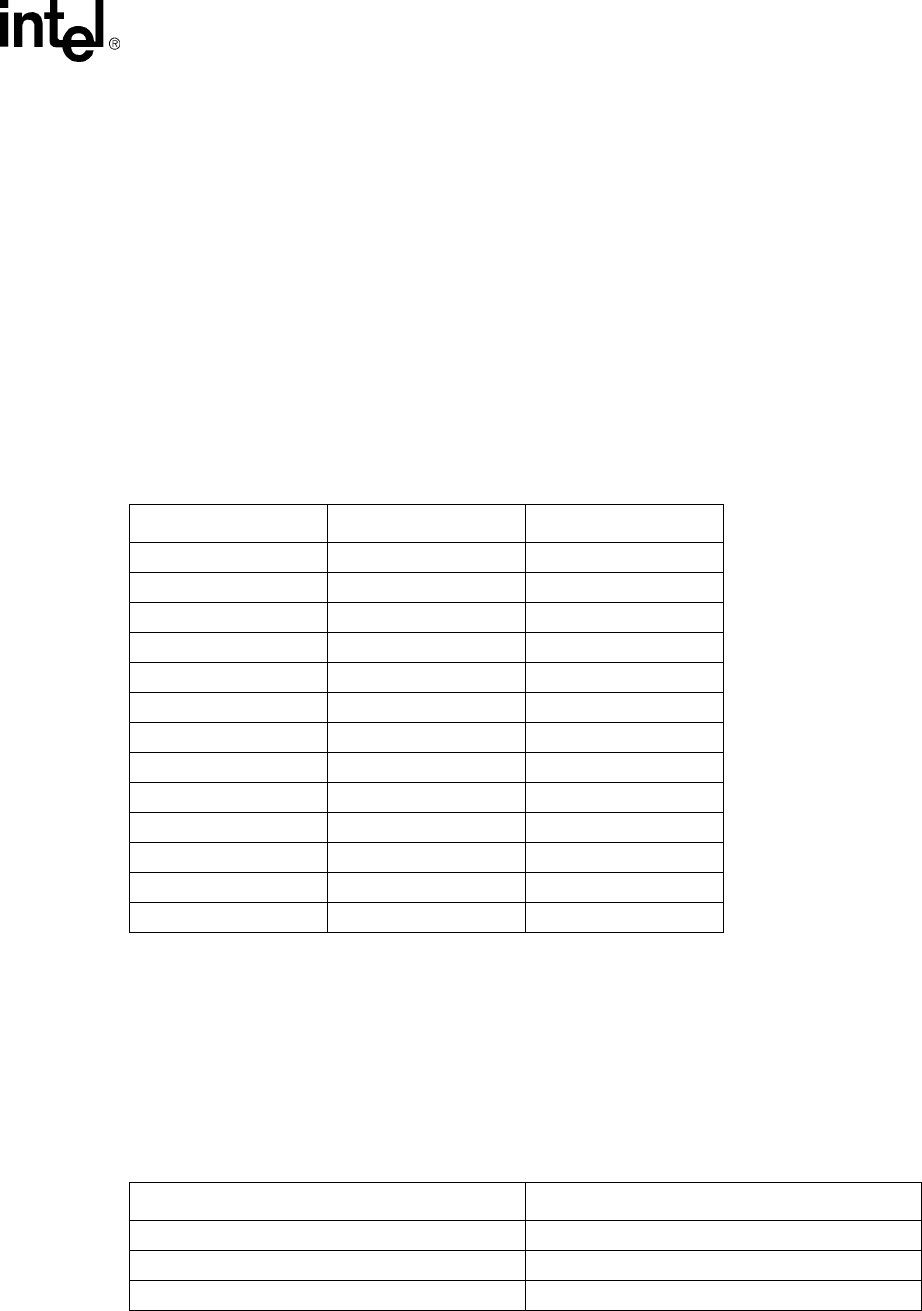

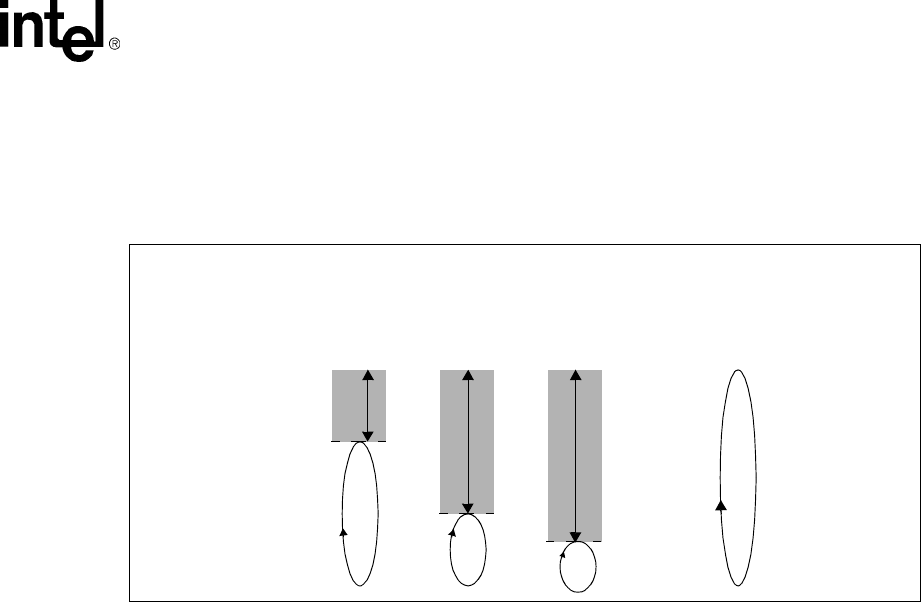

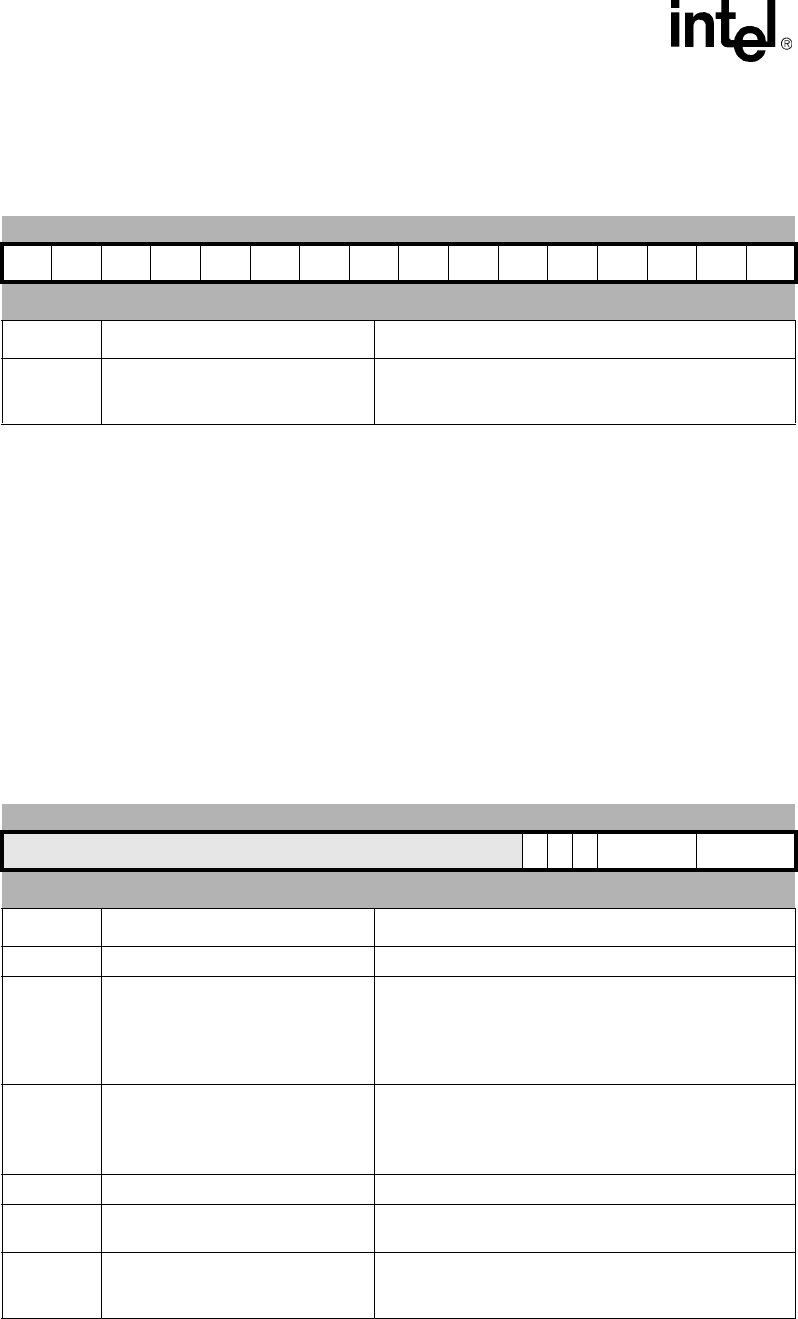

Figure 1-1 shows the major functional blocks of the Intel® XScale™ core. The following sections

give a brief, high-level overview of these blocks.

1.2.2.1 Multiply/Accumulate (MAC)

The MAC unit supports early termination of multiplies/accumulates in two cycles and can sustain a throughput of one MAC operation every cycle. Several architectural enhancements were made to the MAC to support audio coding algorithms, including a 40-bit accumulator and support for 16-bit packed data.

Refer to Section 2.3, “Extensions to ARM* Architecture” on page 2-2 for more information.
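As a rough illustration of what a 40-bit accumulator combined with 16-bit packed data makes possible, the following C sketch models one packed multiply-accumulate step in software. It is not a description of the core's actual instruction encoding or semantics: the function names (`wrap40`, `sext16`, `mac_packed16`), the choice of signed arithmetic, and the wrap-around behavior on overflow are all assumptions made for this example.

```c
#include <assert.h>
#include <stdint.h>

#define ACC_BITS 40 /* accumulator width named in the text */

/* Wrap a value to 40 bits and sign-extend it from bit 39. */
static int64_t wrap40(int64_t v)
{
    const uint64_t mask = (1ULL << ACC_BITS) - 1;
    uint64_t u = (uint64_t)v & mask;
    if (u & (1ULL << (ACC_BITS - 1)))
        return (int64_t)u - (int64_t)(1ULL << ACC_BITS);
    return (int64_t)u;
}

/* Interpret the low 16 bits of x as a signed 16-bit integer. */
static int32_t sext16(uint32_t x)
{
    x &= 0xFFFFu;
    return (x & 0x8000u) ? (int32_t)x - 0x10000 : (int32_t)x;
}

/* Hypothetical model: multiply the two 16-bit halves of a and b
 * pairwise and add both products to a 40-bit accumulator.
 * Overflow wraps modulo 2^40 (an assumption of this sketch). */
static int64_t mac_packed16(int64_t acc, uint32_t a, uint32_t b)
{
    /* The incoming accumulator must already fit in 40 signed bits. */
    assert(acc >= -(1LL << (ACC_BITS - 1)) && acc < (1LL << (ACC_BITS - 1)));

    int64_t lo = (int64_t)sext16(a) * sext16(b);
    int64_t hi = (int64_t)sext16(a >> 16) * sext16(b >> 16);
    return wrap40(acc + lo + hi);
}
```

Each call consumes two 16-bit samples per operand, which is why packed data suits filter kernels. The eight bits above a 32-bit result give the running sum headroom before it wraps.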

1.2.2.2 Memory Management

The Intel® XScale™ core implements the Memory Management Unit (MMU) Architecture

specified in the ARM* Architecture Reference Manual. The MMU provides access protection and

virtual to physical address translation.

The MMU Architecture also specifies the caching policies for the instruction cache and data cache.

These policies are specified as page attributes and include:

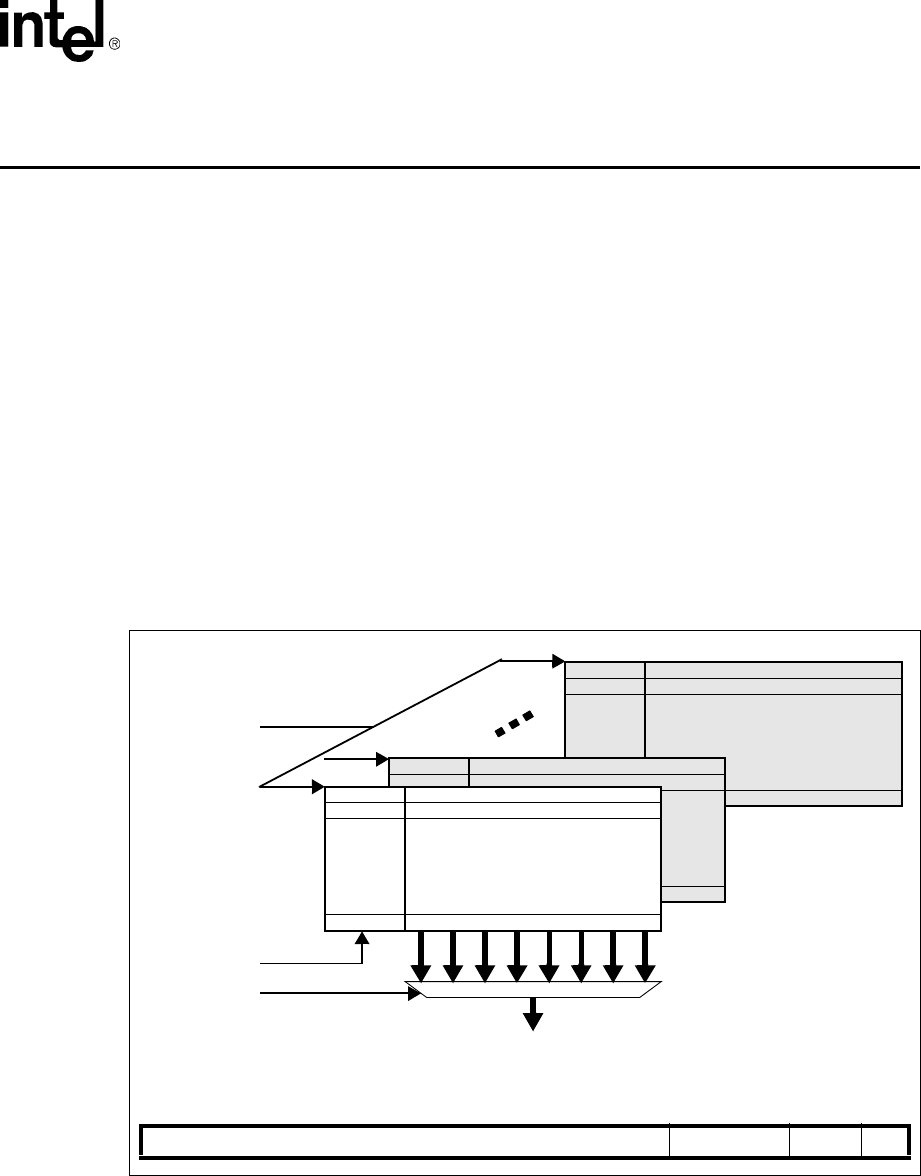

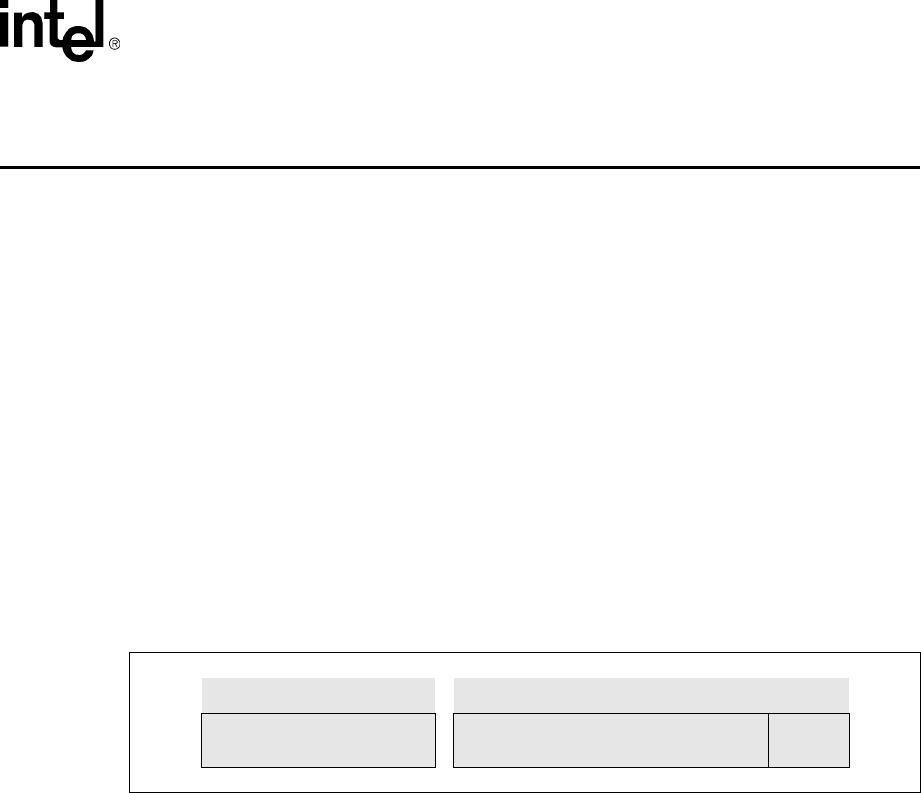

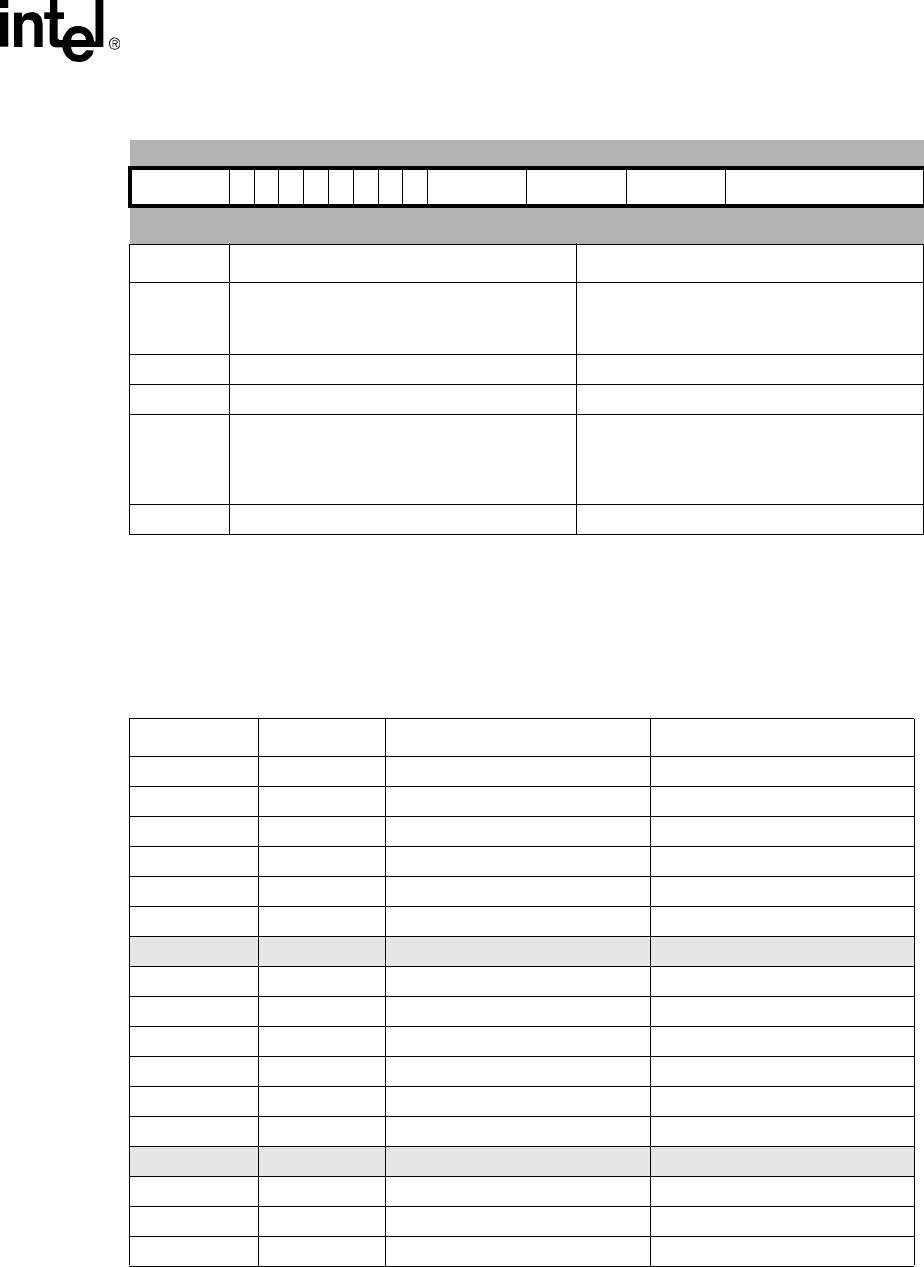

Figure 1-1. Intel® XScale™ Microarchitecture Architecture Features

Micro-

Processor

Instruction Data Cache

Data Ram

Mini-Data

Fill Buffer

Cache Cache

Branch Target

Performance

Debug

IMMU DMMU

Write Buffer

JTAG

Power MAC

Buffer

Mgnt

Ctrl

Monitoring

7 Stage

pipeline

128 Entries

Hardware Breakpoints

Branch History Table

2 Kbytes

2 Ways

Max 32 Kbytes

32 Ways

WR - Back or

WR - Through

Hit under miss

32 Kbytes

32 Ways

Lockable by line

32 entry TLB

Fully associative

Lockable by entry

32 entry TLB

Fully associative

Lockable by entry

4 - 8 entries

Single cycle through-

16-bit SIMD

40-bit accumulator

put (16*32)

Max 28 Kbytes

Re-Map of data

cache

8 entries

Full coalescing

1-4 Intel® XScale™ Microarchitecture User’s Manual

Introduction

•identifying code as cacheable or non-cacheable

•selecting between the mini-data cache or data cache

•write-back or write-through data caching

•enabling data write allocation policy

•enabling the write buffer to coalesce stores to external memory

Refer to Chapter 3, “Memory Management” for more information.

1.2.2.3 Instruction Cache

The Intel® XScale™ core implements a 32-Kbyte, 32-way set associative instruction cache with a

line size of 32 bytes. All requests that “miss” the instruction cache generate a 32-byte read request

to external memory. A mechanism to lock critical code within the cache is also provided.

Refer to Chapter 4, “Instruction Cache” for more information.

In addition to the main instruction cache there is a 2-Kbyte mini-instruction cache dedicated to

advanced debugging features. Refer to Chapter 10, “Software Debug” for more information.

1.2.2.4 Branch Target Buffer

The Intel® XScale™ core provides a Branch Target Buffer (BTB) to predict the outcome of branch

type instructions. It provides storage for the target address of branch type instructions and predicts

the next address to present to the instruction cache when the current instruction address is that of a

branch.

The BTB holds 128 entries. Refer to Chapter 5, “Branch Target Buffer” for more information.

1.2.2.5 Data Cache

The Intel® XScale™ core implements a 32-Kbyte, 32-way set associative data cache and a 2-Kbyte, 2-way set associative mini-data cache. Each cache has a line size of 32 bytes and supports write-through or write-back caching.

The data/mini-data cache is controlled by page attributes defined in the MMU Architecture and by

coprocessor 15.

Refer to Chapter 6, “Data Cache” for more information.
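The cache sizes, associativities, and line size quoted in this chapter determine the number of sets in each cache. The C sketch below works through that arithmetic and shows how an address would split into tag, set index, and byte offset under conventional set-associative indexing. The type and function names are invented for this example, and the assumption that the set index sits directly above the line offset is the textbook arrangement, not something stated here.

```c
#include <assert.h>
#include <stdint.h>

typedef struct {
    uint32_t size_bytes; /* total capacity */
    uint32_t ways;       /* associativity */
    uint32_t line_bytes; /* line size */
} cache_geom;

typedef struct {
    uint32_t tag;
    uint32_t set;
    uint32_t offset;
} cache_addr;

/* Number of sets = capacity / (ways * line size). */
static uint32_t cache_sets(cache_geom g)
{
    assert(g.ways != 0 && g.line_bytes != 0);
    return g.size_bytes / (g.ways * g.line_bytes);
}

/* Integer log2 for power-of-two inputs. */
static uint32_t log2_u32(uint32_t x)
{
    uint32_t n = 0;
    while (x > 1) {
        x >>= 1;
        n++;
    }
    return n;
}

/* Split an address assuming: offset in the low bits, set index
 * directly above it, tag in the remaining high bits. */
static cache_addr split_address(cache_geom g, uint32_t addr)
{
    uint32_t sets = cache_sets(g);
    uint32_t offset_bits = log2_u32(g.line_bytes);
    uint32_t set_bits = log2_u32(sets);
    cache_addr r;

    r.offset = addr & (g.line_bytes - 1);
    r.set = (addr >> offset_bits) & (sets - 1);
    r.tag = addr >> (offset_bits + set_bits);
    return r;
}
```

With the figures given in the text, a 32-Kbyte, 32-way cache with 32-byte lines has 32 sets, and so does the 2-Kbyte, 2-way mini-data cache. Under the assumed layout that gives 5 offset bits, 5 set-index bits, and a 22-bit tag for a 32-bit address.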

The Intel® XScale™ core allows applications to re-configure a portion of the data cache as data

RAM. Software may place special tables or frequently used variables in this RAM. Refer to

Section 6.4, “Re-configuring the Data Cache as Data RAM” on page 6-10 for more information.

1.2.2.6 Fill Buffer & Write Buffer

The Fill Buffer and Write Buffer enable the loading and storing of data to memory beyond the Intel® XScale™ core. The Write Buffer carries all write traffic beyond the core and allows data coalescing when coalescing is both globally enabled and associated with the appropriate memory page types. The Fill Buffer assists the loading of data from memory and, along with an associated Pend Buffer, allows multiple memory reads to be outstanding. Another key function of the Fill Buffer (along with the Instruction Fetch Buffers) is to allow the application processor's external SDRAM to be read as 4-word bursts, rather than single-word accesses, improving overall memory bandwidth.

The Fill, Pend, and Write buffers all help to decouple core speed from any limitations to accessing external memory. Further details on these buffers can be found in Section 6.5, “Write Buffer/Fill Buffer Operation and Control” on page 6-13.
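To make the idea of write coalescing concrete, here is a toy C model of a write buffer that merges a new store into a pending entry covering the same aligned block. It is only a sketch: the 8-entry depth is taken from the Figure 1-1 label, while the 16-byte entry width, the byte-granular interface, and every name in the code are assumptions for illustration and do not describe the real buffer's organization.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#define WB_ENTRIES     8  /* entry count as labeled in Figure 1-1 */
#define WB_ENTRY_BYTES 16 /* ASSUMPTION: bytes covered by one entry */

typedef struct {
    uint32_t base;       /* aligned address this entry covers */
    uint16_t byte_valid; /* bit i set: data[i] holds store data */
    uint8_t  data[WB_ENTRY_BYTES];
} wb_entry;

typedef struct {
    wb_entry entry[WB_ENTRIES];
    int      used;
} write_buffer;

/* Queue a one-byte store.
 * Returns 1 if merged into a pending entry, 0 if a new entry was
 * allocated, -1 if the buffer is full (a real core would stall). */
static int wb_store_byte(write_buffer *wb, uint32_t addr, uint8_t value,
                         int coalesce)
{
    assert(wb != NULL);
    uint32_t base = addr & ~(uint32_t)(WB_ENTRY_BYTES - 1);
    unsigned off = addr & (WB_ENTRY_BYTES - 1);

    if (coalesce) {
        for (int i = 0; i < wb->used; i++) {
            if (wb->entry[i].base == base) {
                /* Same aligned block: merge into the pending entry. */
                wb->entry[i].data[off] = value;
                wb->entry[i].byte_valid |= (uint16_t)(1u << off);
                return 1;
            }
        }
    }
    if (wb->used == WB_ENTRIES)
        return -1;

    wb_entry *e = &wb->entry[wb->used++];
    e->base = base;
    e->byte_valid = (uint16_t)(1u << off);
    e->data[off] = value;
    return 0;
}
```

With coalescing enabled, consecutive byte stores to neighboring addresses occupy a single entry and could leave the core as one external write. With it disabled, each store takes its own entry, so the buffer fills sooner.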

1.2.2.7 Performance Monitoring

Two performance monitoring counters have been added to the Intel® XScale™ core that can be

configured to monitor various events in the Intel® XScale™ core. These events allow a software

developer to measure cache efficiency, detect system bottlenecks and reduce the overall latency of

programs.

Refer to Chapter 8, “Performance Monitoring” for more information.

1.2.2.8 Power Management

The Intel® XScale™ core incorporates a power and clock management unit that can assist ASSPs

in controlling their clocking and managing their power. These features are described in Section 7.3,

“CP14 Registers” on page 7-15.

1.2.2.9 Debug

The Intel® XScale™ core supports software debugging through two instruction-address breakpoint registers, one data-address breakpoint register, one data-address/mask breakpoint register, a mini-instruction cache, and a trace buffer.

Testability and hardware debugging are supported on the Intel® XScale™ core through the Test Access Port (TAP) Controller implementation, which is based on the IEEE 1149.1 (JTAG) Standard Test Access Port and Boundary-Scan Architecture. The purpose of the TAP controller is to support test logic internal and external to the Intel® XScale™ core, such as built-in self-test and boundary-scan.

The JTAG port can also be used as a hardware interface for debugger control of software. Refer to

Chapter 10, “Software Debug” for more information.

1.3 Terminology and Conventions

1.3.1 Number Representation

All numbers in this document can be assumed to be base 10 unless designated otherwise. In text

and pseudo code descriptions, hexadecimal numbers have a prefix of 0x and binary numbers have a

prefix of 0b. For example, 107 would be represented as 0x6B in hexadecimal and 0b1101011 in

binary.

Bitfields are expressed with a colon within square brackets; for example, a * b [63:0] denotes a 64-bit arithmetic partial result.
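The [high:low] bitfield notation maps directly onto a shift-and-mask operation. The short C sketch below shows one way to express it; the helper names `bits` and `mul_u32` are invented for this example and do not appear elsewhere in this manual.

```c
#include <assert.h>
#include <stdint.h>

/* Return bits [hi:lo] (inclusive) of v, right-aligned. */
static uint64_t bits(uint64_t v, unsigned hi, unsigned lo)
{
    assert(hi < 64 && lo <= hi);
    unsigned width = hi - lo + 1;
    /* Shifting a 64-bit value by 64 is undefined in C, so the
     * full-width case is handled separately. */
    uint64_t mask = (width == 64) ? ~0ULL : ((1ULL << width) - 1);
    return (v >> lo) & mask;
}

/* Full 64-bit product of two 32-bit operands, i.e. a * b [63:0]. */
static uint64_t mul_u32(uint32_t a, uint32_t b)
{
    return (uint64_t)a * b;
}
```

For the value 107 (0x6B, 0b1101011), `bits(107, 3, 0)` gives 0xB and `bits(107, 7, 4)` gives 0x6, the two hexadecimal digits.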

1.3.2 Terminology and Acronyms

ASSP: Application Specific Standard Product. Defined for a specific purpose but not exclusively available to a single customer.

API: Application Programming Interface, typically a defined set of function calls and passed parameters defining how layers of software interact.

Assert: This term refers to the logically active value of a signal or bit.

BTB: Branch Target Buffer, a predictor of instructions that follow branches.

Clean: A ‘clean’ operation with regard to a data cache is the writing back of modified data to the external memory system, resulting in no ‘dirty’ lines remaining in the cache.

Coalescing: Coalescing means bringing together a new store operation with an existing store operation already issued. This includes, in Peripheral Component Interconnect [PCI] terminology, write merging, write collapsing, and write combining.

Deassert: This term refers to the logically inactive value of a signal or bit.

Flush: A ‘flush’ operation invalidates the location(s) in the cache by deasserting the valid bit. The invalidated cache line is no longer searched on cache accesses. A ‘flush’ operation on a write-back data cache does not imply a ‘clean’ operation.

NOP: Short for No OPeration, meaning an instruction with no state-changing effect. A typical example might be Add-constant-zero without condition flag update.

Privilege Mode: Any chip mode of operation that is not User Mode, the mode typically used for application software. A privileged mode gains access to shared system resources.

Reserved: A reserved field is a register field that may be used by an implementation but is not intended to be programmed. If the initial value of a reserved field is supplied by software, this value must be zero. Software should not modify reserved fields or depend on any values in reserved fields.
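Two of the definitions above are easy to get wrong in practice: the difference between ‘clean’ and ‘flush’, and the rule that software leaves reserved fields untouched. The following C sketch models both. The cache-line structure, the function names, and the mask-based register helper are illustrative assumptions only, not the core's actual cache operations or register layouts.

```c
#include <assert.h>
#include <stdint.h>
#include <string.h>

#define LINE_BYTES 32 /* cache line size given in this chapter */

typedef struct {
    int     valid;
    int     dirty;
    uint8_t data[LINE_BYTES];
} cache_line;

/* Clean: write modified data back to memory. The line stays valid
 * and is no longer dirty. */
static void line_clean(cache_line *line, uint8_t *backing)
{
    assert(line != NULL && backing != NULL);
    if (line->valid && line->dirty) {
        memcpy(backing, line->data, LINE_BYTES);
        line->dirty = 0;
    }
}

/* Flush: deassert the valid bit only. Dirty data is NOT written
 * back, so a flush without a prior clean discards it. */
static void line_flush(cache_line *line)
{
    assert(line != NULL);
    line->valid = 0;
}

/* Update only the bits selected by field_mask. All other bits,
 * including reserved ones, keep their current value. */
static uint32_t write_fields(uint32_t current, uint32_t field_mask,
                             uint32_t new_bits)
{
    return (current & ~field_mask) | (new_bits & field_mask);
}
```

In this model, calling `line_flush` on a dirty line loses the modified bytes, so write-back data that must survive has to be cleaned first. `write_fields` is meant for a read-modify-write sequence: read the register, call it with a mask covering only the defined fields, then write the result back.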