Valgrind Manual

Valgrind Documentation

Release 3.14.0 9 October 2018

Copyright ©2000-2018 AUTHORS

Permission is granted to copy, distribute and/or modify this document under the terms of the GNU Free Documentation License, Version 1.2 or any later version published by the Free Software Foundation; with no Invariant Sections, with no Front-Cover Texts, and with no Back-Cover Texts. A copy of the license is included in the section entitled The GNU Free Documentation License.

This is the top level of Valgrind’s documentation tree. The documentation is contained in six logically separate documents, as listed in the following Table of Contents. To get started quickly, read the Valgrind Quick Start Guide. For full documentation on Valgrind, read the Valgrind User Manual.

Table of Contents

The Valgrind Quick Start Guide

Valgrind User Manual

Valgrind FAQ

Valgrind Technical Documentation

Valgrind Distribution Documents

GNU Licenses

The Valgrind Quick Start Guide

Table of Contents

The Valgrind Quick Start Guide

1. Introduction

2. Preparing your program

3. Running your program under Memcheck

4. Interpreting Memcheck’s output

5. Caveats

6. More information

The Valgrind Quick Start Guide

1. Introduction

The Valgrind tool suite provides a number of debugging and profiling tools that help you make your programs faster and more correct. The most popular of these tools is called Memcheck. It can detect many memory-related errors that are common in C and C++ programs and that can lead to crashes and unpredictable behaviour.

The rest of this guide gives the minimum information you need to start detecting memory errors in your program with Memcheck. For full documentation of Memcheck and the other tools, please read the User Manual.

2. Preparing your program

Compile your program with -g to include debugging information so that Memcheck’s error messages include exact line numbers. Using -O0 is also a good idea, if you can tolerate the slowdown. With -O1, line numbers in error messages can be inaccurate, although generally speaking running Memcheck on code compiled at -O1 works fairly well, and the speed improvement compared to running -O0 is quite significant. Use of -O2 and above is not recommended, as Memcheck occasionally reports uninitialised-value errors which don’t really exist.

3. Running your program under Memcheck

If you normally run your program like this:

myprog arg1 arg2

Use this command line:

valgrind --leak-check=yes myprog arg1 arg2

Memcheck is the default tool. The --leak-check option turns on the detailed memory leak detector.

Your program will run much slower (eg. 20 to 30 times) than normal, and use a lot more memory. Memcheck will issue messages about memory errors and leaks that it detects.

4. Interpreting Memcheck’s output

Here’s an example C program, in a file called a.c, with a memory error and a memory leak.

    #include <stdlib.h>

    void f(void)
    {
        int* x = malloc(10 * sizeof(int));
        x[10] = 0;        // problem 1: heap block overrun
    }                     // problem 2: memory leak -- x not freed

    int main(void)
    {
        f();
        return 0;
    }

Most error messages look like the following, which describes problem 1, the heap block overrun:

    ==19182== Invalid write of size 4
    ==19182==    at 0x804838F: f (a.c:6)
    ==19182==    by 0x80483AB: main (a.c:11)
    ==19182==  Address 0x1BA45050 is 0 bytes after a block of size 40 alloc'd
    ==19182==    at 0x1B8FF5CD: malloc (vg_replace_malloc.c:130)
    ==19182==    by 0x8048385: f (a.c:5)
    ==19182==    by 0x80483AB: main (a.c:11)

Things to notice:

• There is a lot of information in each error message; read it carefully.

• The 19182 is the process ID; it’s usually unimportant.

• The first line ("Invalid write...") tells you what kind of error it is. Here, the program wrote to some memory it should not have due to a heap block overrun.

• Below the first line is a stack trace telling you where the problem occurred. Stack traces can get quite large, and be confusing, especially if you are using the C++ STL. Reading them from the bottom up can help. If the stack trace is not big enough, use the --num-callers option to make it bigger.

• The code addresses (eg. 0x804838F) are usually unimportant, but occasionally crucial for tracking down weirder bugs.

• Some error messages have a second component which describes the memory address involved. This one shows that the written memory is just past the end of a block allocated with malloc() on line 5 of a.c.

It’s worth fixing errors in the order they are reported, as later errors can be caused by earlier errors. Failing to do this is a common cause of difficulty with Memcheck.
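For comparison, a repaired version of f() might look like the sketch below. It is only an illustration: the name f_fixed and the decision to report a malloc failure through the return value are assumptions, not part of the original a.c. The write stays inside the 10-element block, and the block is freed before the function returns, which addresses both problems flagged in the comments of a.c.

```c
#include <stdlib.h>

/* Hypothetical repaired variant of f() from a.c (the name and the return
 * convention are illustrative). Returns 0 on success, -1 if malloc fails. */
int f_fixed(void)
{
    int *x = malloc(10 * sizeof *x);
    if (x == NULL)
        return -1;

    x[9] = 0;   /* the last valid index of a 10-element block is 9, not 10 */

    free(x);    /* release the block before the only pointer to it goes away */
    return 0;
}
```

Calling f_fixed() from main in place of f() should leave Memcheck with neither an invalid write nor a lost block to report for this function.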

Memory leak messages look like this:

    ==19182== 40 bytes in 1 blocks are definitely lost in loss record 1 of 1
    ==19182==    at 0x1B8FF5CD: malloc (vg_replace_malloc.c:130)
    ==19182==    by 0x8048385: f (a.c:5)
    ==19182==    by 0x80483AB: main (a.c:11)

The stack trace tells you where the leaked memory was allocated. Unfortunately, Memcheck cannot tell you why the memory leaked. (Ignore the "vg_replace_malloc.c"; that’s an implementation detail.)

There are several kinds of leaks; the two most important categories are:

• "definitely lost": your program is leaking memory -- fix it!

• "possibly lost": your program is leaking memory, unless you’re doing funny things with pointers (such as moving them to point to the middle of a heap block).
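The "funny things with pointers" case can be illustrated with a small, hypothetical allocator wrapper that stores a header in front of the data it hands out. All names below (struct header, alloc_with_header, payload_length, free_with_header) are invented for this sketch. The caller only ever holds a pointer into the middle of the heap block, so if such a block is still allocated when the leak check runs, Memcheck finds only an interior pointer and cannot be certain whether the block is still in use.

```c
#include <assert.h>
#include <stdlib.h>

/* Hypothetical bookkeeping header placed in front of the caller's data. */
struct header {
    size_t length;   /* number of payload bytes that follow the header */
};

/* Allocates header + payload in one heap block and returns a pointer to the
 * payload, i.e. a pointer into the middle of the block. Returns NULL on
 * failure. The payload is only guaranteed to be aligned like a size_t. */
void *alloc_with_header(size_t length)
{
    struct header *h = malloc(sizeof *h + length);
    if (h == NULL)
        return NULL;
    h->length = length;
    return h + 1;    /* first byte after the header */
}

/* Recovers the stored length by stepping back from the payload pointer. */
size_t payload_length(const void *payload)
{
    assert(payload != NULL);
    return ((const struct header *)payload - 1)->length;
}

/* Frees the whole block; free() must receive the start of the block, not
 * the interior pointer that the caller holds. */
void free_with_header(void *payload)
{
    if (payload != NULL)
        free((struct header *)payload - 1);
}
```

A program that pairs every alloc_with_header call with free_with_header gives the leak checker nothing to report; the ambiguity only arises for blocks that are still live at the time of the check.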

Memcheck also reports uses of uninitialised values, most commonly with the message "Conditional jump or move depends on uninitialised value(s)". It can be difficult to determine the root cause of these errors. Try using the --track-origins=yes option to get extra information. This makes Memcheck run slower, but the extra information you get often saves a lot of time figuring out where the uninitialised values are coming from.
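A minimal sketch of how such an error typically arises is given below. The function names are made up for illustration. In count_buggy, two of the four elements returned by malloc are never written, so the comparison inside count_positive branches on undefined data; this is the kind of code for which Memcheck would be expected to print the message quoted above. count_fixed uses calloc so that every element has a defined value before it is read.

```c
#include <assert.h>
#include <stdlib.h>

/* Counts the strictly positive entries. The `if` is a conditional jump whose
 * outcome depends on the value of values[i]. */
int count_positive(const int *values, size_t n)
{
    assert(values != NULL || n == 0);
    int count = 0;
    for (size_t i = 0; i < n; i++)
        if (values[i] > 0)
            count++;
    return count;
}

/* Buggy: malloc does not initialise memory, and values[2] and values[3] are
 * never written before being read. Returns -1 if the allocation fails. */
int count_buggy(void)
{
    int *values = malloc(4 * sizeof *values);
    if (values == NULL)
        return -1;
    values[0] = 1;
    values[1] = 2;
    int count = count_positive(values, 4);   /* reads two undefined elements */
    free(values);
    return count;
}

/* Fixed: calloc zero-fills the block, so all four elements are defined. */
int count_fixed(void)
{
    int *values = calloc(4, sizeof *values);
    if (values == NULL)
        return -1;
    values[0] = 1;
    values[1] = 2;
    int count = count_positive(values, 4);
    free(values);
    return count;
}
```

The error is reported at the point where the undefined value influences a branch (inside count_positive), not where the block was allocated, which is why the origin-tracking option mentioned above can help connect the report back to the malloc call.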

If you don’t understand an error message, please consult Explanation of error messages from Memcheck in the Valgrind User Manual, which has examples of all the error messages Memcheck produces.

5. Caveats

Memcheck is not perfect; it occasionally produces false positives, and there are mechanisms for suppressing these (see Suppressing errors in the Valgrind User Manual). However, it is typically right 99% of the time, so you should be wary of ignoring its error messages. After all, you wouldn’t ignore warning messages produced by a compiler, right? The suppression mechanism is also useful if Memcheck is reporting errors in library code that you cannot change. The default suppression set hides a lot of these, but you may come across more.

Memcheck cannot detect every memory error your program has. For example, it can’t detect out-of-range reads or writes to arrays that are allocated statically or on the stack. But it should detect many errors that could crash your program (eg. cause a segmentation fault).
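Because out-of-range accesses to static and stack arrays fall outside what Memcheck can check, an explicit range check in the program is one way to cover this gap. The sketch below is illustrative only; the table and all three function names are assumptions. A call such as store_unchecked(10, 7) would write past the end of a static array, which, per the limitation described above, Memcheck would not be expected to flag, whereas store_checked rejects the index before any write happens.

```c
#include <stddef.h>

#define TABLE_LEN 10

/* Statically allocated array: overruns of it are not detected by Memcheck. */
static int table[TABLE_LEN];

/* No bounds check. An index >= TABLE_LEN overruns the array. */
void store_unchecked(size_t index, int value)
{
    table[index] = value;
}

/* Returns 0 on success, -1 if index is out of range (nothing is written). */
int store_checked(size_t index, int value)
{
    if (index >= TABLE_LEN)
        return -1;
    table[index] = value;
    return 0;
}

/* Reads back an element; the caller must pass index < TABLE_LEN. */
int load(size_t index)
{
    return table[index];
}
```

The User Manual also lists SGCheck, an experimental tool aimed at overruns of stack and global arrays, as a complement to Memcheck for this class of error.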

Try to make your program so clean that Memcheck reports no errors. Once you achieve this state, it is much easier to see when changes to the program cause Memcheck to report new errors. Experience from several years of Memcheck use shows that it is possible to make even huge programs run Memcheck-clean. For example, large parts of KDE, OpenOffice.org and Firefox are Memcheck-clean, or very close to it.

6. More information

Please consult the Valgrind FAQ and the Valgrind User Manual, which have much more information. Note that the other tools in the Valgrind distribution can be invoked with the --tool option.

Valgrind User Manual

Table of Contents

1. Introduction

1.1. An Overview of Valgrind

1.2. How to navigate this manual

2. Using and understanding the Valgrind core

2.1. What Valgrind does with your program

2.2. Getting started

2.3. The Commentary

2.4. Reporting of errors

2.5. Suppressing errors

2.6. Core Command-line Options

2.6.1. Tool-selection Option

2.6.2. Basic Options

2.6.3. Error-related Options

2.6.4. malloc-related Options

2.6.5. Uncommon Options

2.6.6. Debugging Options

2.6.7. Setting Default Options

2.7. Support for Threads

2.7.1. Scheduling and Multi-Thread Performance

2.8. Handling of Signals

2.9. Execution Trees

2.10. Building and Installing Valgrind

2.11. If You Have Problems

2.12. Limitations

2.13. An Example Run

2.14. Warning Messages You Might See

3. Using and understanding the Valgrind core: Advanced Topics

3.1. The Client Request mechanism

3.2. Debugging your program using Valgrind gdbserver and GDB

3.2.1. Quick Start: debugging in 3 steps

3.2.2. Valgrind gdbserver overall organisation

3.2.3. Connecting GDB to a Valgrind gdbserver

3.2.4. Connecting to an Android gdbserver

3.2.5. Monitor command handling by the Valgrind gdbserver

3.2.6. Valgrind gdbserver thread information

3.2.7. Examining and modifying Valgrind shadow registers

3.2.8. Limitations of the Valgrind gdbserver

3.2.9. vgdb command line options

3.2.10. Valgrind monitor commands

3.3. Function wrapping

3.3.1. A Simple Example

3.3.2. Wrapping Specifications

3.3.3. Wrapping Semantics

3.3.4. Debugging

3.3.5. Limitations - control flow

3.3.6. Limitations - original function signatures

3.3.7. Examples

4. Memcheck: a memory error detector

4.1. Overview

4.2. Explanation of error messages from Memcheck

4.2.1. Illegal read / Illegal write errors

4.2.2. Use of uninitialised values

4.2.3. Use of uninitialised or unaddressable values in system calls

4.2.4. Illegal frees

4.2.5. When a heap block is freed with an inappropriate deallocation function

4.2.6. Overlapping source and destination blocks

4.2.7. Fishy argument values

4.2.8. Memory leak detection

4.3. Memcheck Command-Line Options

4.4. Writing suppression files

4.5. Details of Memcheck’s checking machinery

4.5.1. Valid-value (V) bits

4.5.2. Valid-address (A) bits

4.5.3. Putting it all together

4.6. Memcheck Monitor Commands

4.7. Client Requests

4.8. Memory Pools: describing and working with custom allocators

4.9. Debugging MPI Parallel Programs with Valgrind

4.9.1. Building and installing the wrappers

4.9.2. Getting started

4.9.3. Controlling the wrapper library

4.9.4. Functions

4.9.5. Types

4.9.6. Writing new wrappers

4.9.7. What to expect when using the wrappers

5. Cachegrind: a cache and branch-prediction profiler

5.1. Overview

5.2. Using Cachegrind, cg_annotate and cg_merge

5.2.1. Running Cachegrind

5.2.2. Output File

5.2.3. Running cg_annotate

5.2.4. The Output Preamble

5.2.5. The Global and Function-level Counts

5.2.6. Line-by-line Counts

5.2.7. Annotating Assembly Code Programs

5.2.8. Forking Programs

5.2.9. cg_annotate Warnings

5.2.10. Unusual Annotation Cases

5.2.11. Merging Profiles with cg_merge

5.2.12. Differencing Profiles with cg_diff

5.3. Cachegrind Command-line Options

5.4. cg_annotate Command-line Options

5.5. cg_merge Command-line Options

5.6. cg_diff Command-line Options

5.7. Acting on Cachegrind’s Information

5.8. Simulation Details

5.8.1. Cache Simulation Specifics

5.8.2. Branch Simulation Specifics

5.8.3. Accuracy

5.9. Implementation Details

5.9.1. How Cachegrind Works

5.9.2. Cachegrind Output File Format

6. Callgrind: a call-graph generating cache and branch prediction profiler

6.1. Overview

6.1.1. Functionality

6.1.2. Basic Usage

6.2. Advanced Usage

6.2.1. Multiple profiling dumps from one program run

6.2.2. Limiting the range of collected events

6.2.3. Counting global bus events

6.2.4. Avoiding cycles

6.2.5. Forking Programs

6.3. Callgrind Command-line Options

6.3.1. Dump creation options

6.3.2. Activity options

6.3.3. Data collection options

6.3.4. Cost entity separation options

6.3.5. Simulation options

6.3.6. Cache simulation options

6.4. Callgrind Monitor Commands

6.5. Callgrind specific client requests

6.6. callgrind_annotate Command-line Options

6.7. callgrind_control Command-line Options

7. Helgrind: a thread error detector

7.1. Overview

7.2. Detected errors: Misuses of the POSIX pthreads API

7.3. Detected errors: Inconsistent Lock Orderings

7.4. Detected errors: Data Races

7.4.1. A Simple Data Race

7.4.2. Helgrind’s Race Detection Algorithm

7.4.3. Interpreting Race Error Messages

7.5. Hints and Tips for Effective Use of Helgrind

7.6. Helgrind Command-line Options

7.7. Helgrind Monitor Commands

7.8. Helgrind Client Requests

7.9. A To-Do List for Helgrind

8. DRD: a thread error detector

8.1. Overview

8.1.1. Multithreaded Programming Paradigms

8.1.2. POSIX Threads Programming Model

8.1.3. Multithreaded Programming Problems

8.1.4. Data Race Detection

8.2. Using DRD

8.2.1. DRD Command-line Options

8.2.2. Detected Errors: Data Races

8.2.3. Detected Errors: Lock Contention

8.2.4. Detected Errors: Misuse of the POSIX threads API

8.2.5. Client Requests

8.2.6. Debugging C++11 Programs

8.2.7. Debugging GNOME Programs

8.2.8. Debugging Boost.Thread Programs

8.2.9. Debugging OpenMP Programs

8.2.10. DRD and Custom Memory Allocators

8.2.11. DRD Versus Memcheck

8.2.12. Resource Requirements

8.2.13. Hints and Tips for Effective Use of DRD

8.3. Using the POSIX Threads API Effectively

8.3.1. Mutex types

8.3.2. Condition variables

8.3.3. pthread_cond_timedwait and timeouts

8.4. Limitations

8.5. Feedback

9. Massif: a heap profiler

9.1. Overview

9.2. Using Massif and ms_print

9.2.1. An Example Program

9.2.2. Running Massif

9.2.3. Running ms_print

9.2.4. The Output Preamble

9.2.5. The Output Graph

9.2.6. The Snapshot Details

9.2.7. Forking Programs

9.2.8. Measuring All Memory in a Process

9.2.9. Acting on Massif’s Information

9.3. Massif Command-line Options

9.4. Massif Monitor Commands

9.5. Massif Client Requests

9.6. ms_print Command-line Options

9.7. Massif’s Output File Format

10. DHAT: a dynamic heap analysis tool

10.1. Overview

10.2. Understanding DHAT’s output

10.2.1. Interpreting the max-live, tot-alloc and deaths fields

10.2.2. Interpreting the acc-ratios fields

10.2.3. Interpreting "Aggregated access counts by offset" data

10.3. DHAT Command-line Options

11. SGCheck: an experimental stack and global array overrun detector

11.1. Overview

11.2. SGCheck Command-line Options

11.3. How SGCheck Works

11.4. Comparison with Memcheck

11.5. Limitations

11.6. Still To Do: User-visible Functionality

11.7. Still To Do: Implementation Tidying

12. BBV: an experimental basic block vector generation tool

12.1. Overview

12.2. Using Basic Block Vectors to create SimPoints

12.3. BBV Command-line Options

12.4. Basic Block Vector File Format

12.5. Implementation

12.6. Threaded Executable Support

12.7. Validation

12.8. Performance

13. Lackey: an example tool

13.1. Overview

13.2. Lackey Command-line Options

14. Nulgrind: the minimal Valgrind tool

14.1. Overview

1. Introduction

1.1. An Overview of Valgrind

Valgrind is an instrumentation framework for building dynamic analysis tools. It comes with a set of tools each of which performs some kind of debugging, profiling, or similar task that helps you improve your programs. Valgrind’s architecture is modular, so new tools can be created easily and without disturbing the existing structure.

A number of useful tools are supplied as standard.

1. Memcheck is a memory error detector. It helps you make your programs, particularly those written in C and C++,

more correct.

2. Cachegrind is a cache and branch-prediction profiler. It helps you make your programs run faster.

3. Callgrind is a call-graph generating cache profiler. It has some overlap with Cachegrind, but also gathers some

information that Cachegrind does not.

4. Helgrind is a thread error detector. It helps you make your multi-threaded programs more correct.

5. DRD is also a thread error detector. It is similar to Helgrind but uses different analysis techniques and so may find

different problems.

6. Massif is a heap profiler. It helps you make your programs use less memory.

7. DHAT is a different kind of heap profiler. It helps you understand issues of block lifetimes, block utilisation, and

layout inefficiencies.

8. SGcheck is an experimental tool that can detect overruns of stack and global arrays. Its functionality is

complementary to that of Memcheck: SGcheck finds problems that Memcheck can’t, and vice versa.

9. BBV is an experimental SimPoint basic block vector generator. It is useful to people doing computer architecture

research and development.

There are also a couple of minor tools that aren’t useful to most users: Lackey is an example tool that illustrates

some instrumentation basics; and Nulgrind is the minimal Valgrind tool that does no analysis or instrumentation, and

is only useful for testing purposes.

Valgrind is closely tied to details of the CPU and operating system, and to a lesser extent, the compiler and basic C

libraries. Nonetheless, it supports a number of widely-used platforms, listed in full at http://www.valgrind.org/.

Valgrind is built via the standard Unix ./configure, make, make install process; full details are given in

the README file in the distribution.

Valgrind is licensed under the GNU General Public License, version 2. The valgrind/*.h headers that

you may wish to include in your code (e.g. valgrind.h, memcheck.h, helgrind.h, etc.) are distributed under

a BSD-style license, so you may include them in your code without worrying about license conflicts. Some of

the PThreads test cases, pth_*.c, are taken from "Pthreads Programming" by Bradford Nichols, Dick Buttlar &

Jacqueline Proulx Farrell, ISBN 1-56592-115-1, published by O’Reilly & Associates, Inc.

If you contribute code to Valgrind, please ensure your contributions are licensed as "GPLv2, or (at your option) any

later version." This is so as to allow the possibility of easily upgrading the license to GPLv3 in future. If you want to

modify code in the VEX subdirectory, please also see the file VEX/HACKING.README in the distribution.

1.2. How to navigate this manual

This manual’s structure reflects the structure of Valgrind itself. First, we describe the Valgrind core, how to use it, and

the options it supports. Then, each tool has its own chapter in this manual. You only need to read the documentation

for the core and for the tool(s) you actually use, although you may find it helpful to be at least a little bit familiar with

what all tools do. If you’re new to all this, you probably want to run the Memcheck tool and you might find The

Valgrind Quick Start Guide useful.

Be aware that the core understands some command line options, and the tools have their own options which they know

about. This means there is no central place describing all the options that are accepted -- you have to read the options

documentation both for Valgrind’s core and for the tool you want to use.

2. Using and understanding the Valgrind core

This chapter describes the Valgrind core services, command-line options and behaviours. That means it is relevant

regardless of what particular tool you are using. The information should be sufficient for you to make effective

day-to-day use of Valgrind. Advanced topics related to the Valgrind core are described in Valgrind’s core: advanced

topics.

A point of terminology: most references to "Valgrind" in this chapter refer to the Valgrind core services.

2.1. What Valgrind does with your program

Valgrind is designed to be as non-intrusive as possible. It works directly with existing executables. You don’t need to

recompile, relink, or otherwise modify the program to be checked.

You invoke Valgrind like this:

valgrind [valgrind-options] your-prog [your-prog-options]

The most important option is --tool which dictates which Valgrind tool to run. For example, if you want to run the

command ls -l using the memory-checking tool Memcheck, issue this command:

valgrind --tool=memcheck ls -l

However, Memcheck is the default, so if you want to use it you can omit the --tool option.
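The invocation pattern above can also be assembled from a script. The following Python sketch is only an illustration and is not part of Valgrind: the helper name valgrind_argv and its parameters are hypothetical, and it merely builds an argument list of the documented form without running anything.

```python
import shlex


def valgrind_argv(program_argv, tool=None, valgrind_options=()):
    """Build: valgrind [valgrind-options] your-prog [your-prog-options].

    Hypothetical helper for illustration; it does not run Valgrind.
    """
    argv = ["valgrind"]
    if tool is not None:
        # Leaving --tool out lets Valgrind pick its default tool, Memcheck.
        argv.append("--tool=" + tool)
    argv.extend(valgrind_options)
    argv.extend(program_argv)
    return argv


if __name__ == "__main__":
    # Shows the command line as a shell would need it to be typed.
    print(shlex.join(valgrind_argv(["ls", "-l"], tool="memcheck")))
```

The resulting list can be handed to subprocess.run unchanged, which avoids shell quoting problems in the program's own arguments.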

Regardless of which tool is in use, Valgrind takes control of your program before it starts. Debugging information is

read from the executable and associated libraries, so that error messages and other outputs can be phrased in terms of

source code locations, when appropriate.

Your program is then run on a synthetic CPU provided by the Valgrind core. As new code is executed for the first

time, the core hands the code to the selected tool. The tool adds its own instrumentation code to this and hands the

result back to the core, which coordinates the continued execution of this instrumented code.

The amount of instrumentation code added varies widely between tools. At one end of the scale, Memcheck adds

code to check every memory access and every value computed, making it run 10-50 times slower than natively. At the

other end of the spectrum, the minimal tool, called Nulgrind, adds no instrumentation at all and causes in total "only"

about a 4 times slowdown.

Valgrind simulates every single instruction your program executes. Because of this, the active tool checks, or profiles,

not only the code in your application but also in all supporting dynamically-linked libraries, including the C library,

graphical libraries, and so on.

If you’re using an error-detection tool, Valgrind may detect errors in system libraries, for example the GNU C or X11

libraries, which you have to use. You might not be interested in these errors, since you probably have no control

over that code. Therefore, Valgrind allows you to selectively suppress errors, by recording them in a suppressions

file which is read when Valgrind starts up. The build mechanism selects default suppressions which give reasonable

behaviour for the OS and libraries detected on your machine. To make it easier to write suppressions, you can use the

--gen-suppressions=yes option. This tells Valgrind to print out a suppression for each reported error, which

you can then copy into a suppressions file.

Different error-checking tools report different kinds of errors. The suppression mechanism therefore allows you to say

which tool(s) each suppression applies to.

2.2. Getting started

First off, consider whether it might be beneficial to recompile your application and supporting libraries with debugging

info enabled (the -g option). Without debugging info, the best Valgrind tools will be able to do is guess which function

a particular piece of code belongs to, which makes both error messages and profiling output nearly useless. With -g,

you’ll get messages which point directly to the relevant source code lines.

Another option you might like to consider, if you are working with C++, is -fno-inline. That makes it easier to

see the function-call chain, which can help reduce confusion when navigating around large C++ apps. For example,

debugging OpenOffice.org with Memcheck is a bit easier when using this option. You don’t have to do this, but doing

so helps Valgrind produce more accurate and less confusing error reports. Chances are you’re set up like this already,

if you intended to debug your program with GNU GDB, or some other debugger. Alternatively, the Valgrind option

--read-inline-info=yes instructs Valgrind to read the debug information describing inlining information.

With this, the function call chain will be shown properly, even when your application is compiled with inlining.

If you are planning to use Memcheck: On rare occasions, compiler optimisations (at -O2 and above, and sometimes

-O1) have been observed to generate code which fools Memcheck into wrongly reporting uninitialised value errors,

or missing uninitialised value errors. We have looked in detail into fixing this, and unfortunately the result is that

doing so would give a further significant slowdown in what is already a slow tool. So the best solution is to turn off

optimisation altogether. Since this often makes things unmanageably slow, a reasonable compromise is to use -O.

This gets you the majority of the benefits of higher optimisation levels whilst keeping relatively small the chances of

false positives or false negatives from Memcheck. Also, you should compile your code with -Wall because it can

identify some or all of the problems that Valgrind can miss at the higher optimisation levels. (Using -Wall is also a

good idea in general.) All other tools (as far as we know) are unaffected by optimisation level, and for profiling tools

like Cachegrind it is better to compile your program at its normal optimisation level.

Valgrind understands the DWARF2/3/4 formats used by GCC 3.1 and later. The reader for "stabs" debugging format

(used by GCC versions prior to 3.1) has been disabled in Valgrind 3.9.0.

When you’re ready to roll, run Valgrind as described above. Note that you should run the real (machine-code)

executable here. If your application is started by, for example, a shell or Perl script, you’ll need to modify it to

invoke Valgrind on the real executables. Running such scripts directly under Valgrind will result in you getting error

reports pertaining to /bin/sh, /usr/bin/perl, or whatever interpreter you’re using. This may not be what you

want and can be confusing. You can force the issue by giving the option --trace-children=yes, but confusion

is still likely.

2.3. The Commentary

Valgrind tools write a commentary, a stream of text, detailing error reports and other significant events. All lines in

the commentary have the following form:

==12345== some-message-from-Valgrind

The 12345 is the process ID. This scheme makes it easy to distinguish program output from Valgrind commentary,

and also easy to differentiate commentaries from different processes which have become merged together, for whatever

reason.
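Because every commentary line carries the ==PID== prefix, a merged stream can be separated again with a few lines of script. The Python sketch below is a minimal illustration under that assumption only; the function name split_commentary is hypothetical, and lines with any other prefix are simply treated as program output.

```python
import re

# A commentary line: "==", the process ID, "==", then the message.
COMMENTARY = re.compile(r"^==(\d+)== ?(.*)$")


def split_commentary(lines):
    """Separate Valgrind commentary from other output.

    Returns (commentary, other), where commentary maps each process ID
    to the list of its messages, in order of appearance.
    """
    commentary, other = {}, []
    for line in lines:
        match = COMMENTARY.match(line)
        if match:
            pid = int(match.group(1))
            commentary.setdefault(pid, []).append(match.group(2))
        else:
            other.append(line)
    return commentary, other
```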

By default, Valgrind tools write only essential messages to the commentary, so as to avoid flooding you with

information of secondary importance. If you want more information about what is happening, re-run, passing the -v

option to Valgrind. A second -v gives yet more detail.

You can direct the commentary to three different places:

1. The default: send it to a file descriptor, which is by default 2 (stderr). So, if you give the core no options, it will

write commentary to the standard error stream. If you want to send it to some other file descriptor, for example

number 9, you can specify --log-fd=9.

This is the simplest and most common arrangement, but can cause problems when Valgrinding entire trees of

processes which expect specific file descriptors, particularly stdin/stdout/stderr, to be available for their own use.

2. A less intrusive option is to write the commentary to a file, which you specify by --log-file=filename.

There are special format specifiers that can be used to insert a process ID or the value of an environment variable into the log

file name. These are useful/necessary if your program invokes multiple processes (especially for MPI programs).

See the basic options section for more details.

3. The least intrusive option is to send the commentary to a network socket. The socket is specified as an IP address

and port number pair, like this: --log-socket=192.168.0.1:12345 if you want to send the output to host

IP 192.168.0.1 port 12345 (note: we have no idea if 12345 is a port of pre-existing significance). You can also omit

the port number: --log-socket=192.168.0.1, in which case a default port of 1500 is used. This default is

defined by the constant VG_CLO_DEFAULT_LOGPORT in the sources.

Note, unfortunately, that you have to use an IP address here, rather than a hostname.

Writing to a network socket is pointless if you don’t have something listening at the other end. We provide a simple

listener program, valgrind-listener, which accepts connections on the specified port and copies whatever

it is sent to stdout. Probably someone will tell us this is a horrible security risk. It seems likely that people will

write more sophisticated listeners in the fullness of time.

valgrind-listener can accept simultaneous connections from up to 50 Valgrinded processes. In front of

each line of output it prints the current number of active connections in round brackets.

valgrind-listener accepts three command-line options:

-e --exit-at-zero

When the number of connected processes falls back to zero, exit. Without this, it will run forever, that is, until you

send it Control-C.

--max-connect=INTEGER

By default, the listener can connect to up to 50 processes. Occasionally, that number is too small. Use this option

to provide a different limit. E.g. --max-connect=100.

portnumber

Changes the port it listens on from the default (1500). The specified port must be in the range 1024 to 65535. The

same restriction applies to port numbers specified by a --log-socket to Valgrind itself.
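The rules for socket addresses given above (an IP address rather than a hostname, an optional port defaulting to 1500, and a port range of 1024 to 65535) can be summarised in a short Python sketch. This is a model of the documented behaviour, not Valgrind's own parser: the function name parse_log_socket is hypothetical, and restricting the address to IPv4 is an assumption based on the example address.

```python
import ipaddress

DEFAULT_LOG_PORT = 1500  # default port stated in the text


def parse_log_socket(value):
    """Split an 'ip-address[:port]' string into (address, port).

    Raises ValueError for hostnames, malformed ports and ports
    outside the documented range 1024 to 65535.
    """
    host, separator, port_text = value.partition(":")
    # Assumption: IPv4 only. Hostnames are rejected here with ValueError.
    ipaddress.IPv4Address(host)
    port = int(port_text) if separator else DEFAULT_LOG_PORT
    if not 1024 <= port <= 65535:
        raise ValueError("port must be in the range 1024 to 65535")
    return host, port
```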

If a Valgrinded process fails to connect to a listener, for whatever reason (the listener isn’t running, invalid or

unreachable host or port, etc), Valgrind switches back to writing the commentary to stderr. The same goes for

any process which loses an established connection to a listener. In other words, killing the listener doesn’t kill the

processes sending data to it.

Here is an important point about the relationship between the commentary and profiling output from tools. The

commentary contains a mix of messages from the Valgrind core and the selected tool. If the tool reports errors, it will

report them to the commentary. However, if the tool does profiling, the profile data will be written to a file of some

kind, depending on the tool, and independent of what --log-* options are in force. The commentary is intended

to be a low-bandwidth, human-readable channel. Profiling data, on the other hand, is usually voluminous and not

meaningful without further processing, which is why we have chosen this arrangement.

2.4. Reporting of errors

When an error-checking tool detects something bad happening in the program, an error message is written to the

commentary. Here’s an example from Memcheck:

==25832== Invalid read of size 4
==25832==    at 0x8048724: BandMatrix::ReSize(int, int, int) (bogon.cpp:45)
==25832==    by 0x80487AF: main (bogon.cpp:66)
==25832==  Address 0xBFFFF74C is not stack'd, malloc'd or free'd

This message says that the program did an illegal 4-byte read of address 0xBFFFF74C, which, as far as Memcheck

can tell, is not a valid stack address, nor corresponds to any current heap blocks or recently freed heap blocks. The

read is happening at line 45 of bogon.cpp, called from line 66 of the same file, etc. For errors associated with

an identified (current or freed) heap block, for example reading freed memory, Valgrind reports not only the location

where the error happened, but also where the associated heap block was allocated/freed.
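For scripted triage it can be handy to pull the call chain out of such a report. The following Python sketch is one possible approach, assuming frames look like the "at"/"by" lines in the example above with a (file:line) suffix; the names FRAME and parse_frames are hypothetical, and frames that lack source information are skipped.

```python
import re

# "at" marks the innermost frame, "by" each caller further out.
FRAME = re.compile(
    r"^==\d+==\s+(?:at|by) (0x[0-9A-Fa-f]+): (.+) \(([^():]+):(\d+)\)$"
)


def parse_frames(report_lines):
    """Return (function, filename, line) tuples, innermost frame first."""
    frames = []
    for line in report_lines:
        match = FRAME.match(line)
        if match:
            _address, function, filename, lineno = match.groups()
            frames.append((function, filename, int(lineno)))
    return frames
```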

Valgrind remembers all error reports. When an error is detected, it is compared against old reports, to see if it is a

duplicate. If so, the error is noted, but no further commentary is emitted. This avoids you being swamped with

bazillions of duplicate error reports.

If you want to know how many times each error occurred, run with the -v option. When execution finishes, all the

reports are printed out, along with, and sorted by, their occurrence counts. This makes it easy to see which errors have

occurred most frequently.

Errors are reported before the associated operation actually happens. For example, if you’re using Memcheck and

your program attempts to read from address zero, Memcheck will emit a message to this effect, and your program will

then likely die with a segmentation fault.

In general, you should try and fix errors in the order that they are reported. Not doing so can be confusing. For

example, a program which copies uninitialised values to several memory locations, and later uses them, will generate

several error messages, when run on Memcheck. The first such error message may well give the most direct clue to

the root cause of the problem.

The process of detecting duplicate errors is quite an expensive one and can become a significant performance overhead

if your program generates huge quantities of errors. To avoid serious problems, Valgrind will simply stop collecting

errors after 1,000 different errors have been seen, or 10,000,000 errors in total have been seen. In this situation you

might as well stop your program and fix it, because Valgrind won’t tell you anything else useful after this. Note that

the 1,000/10,000,000 limits apply after suppressed errors are removed. These limits are defined in m_errormgr.c

and can be increased if necessary.

To avoid this cutoff you can use the --error-limit=no option. Then Valgrind will always show errors, regardless

of how many there are. Use this option carefully, since it may have a bad effect on performance.
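The interplay of duplicate detection, occurrence counts and the two limits can be pictured with a small model. The Python sketch below is a simplification written for this explanation and does not reflect the code in m_errormgr.c: the class name ErrorLog, the use of an opaque key to decide whether two errors are duplicates, and the ascending order of the summary are all assumptions.

```python
from collections import Counter


class ErrorLog:
    """Toy model of error collection with duplicate detection and limits."""

    def __init__(self, max_distinct=1000, max_total=10_000_000, error_limit=True):
        self.max_distinct = max_distinct
        self.max_total = max_total
        self.error_limit = error_limit  # False models --error-limit=no
        self.counts = Counter()
        self.total = 0

    def record(self, key):
        """Record one error; return True if it should be printed now."""
        if self.error_limit and (
            len(self.counts) >= self.max_distinct or self.total >= self.max_total
        ):
            return False  # collection has stopped
        self.total += 1
        self.counts[key] += 1
        # Only the first sighting is printed; duplicates are just counted.
        return self.counts[key] == 1

    def summary(self):
        """Errors with their occurrence counts, least frequent first."""
        return sorted(self.counts.items(), key=lambda item: item[1])
```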

2.5. Suppressing errors

The error-checking tools detect numerous problems in the system libraries, such as the C library, which come

pre-installed with your OS. You can’t easily fix these, but you don’t want to see these errors (and yes, there are many!).

So Valgrind reads a list of errors to suppress at startup. A default suppression file is created by the ./configure

script when the system is built.

You can modify and add to the suppressions file at your leisure, or, better, write your own. Multiple suppression files

are allowed. This is useful if part of your project contains errors you can’t or don’t want to fix, yet you don’t want to

continuously be reminded of them.

Note: By far the easiest way to add suppressions is to use the --gen-suppressions=yes option described in

Core Command-line Options. This generates suppressions automatically. For best results, though, you may want to

edit the output of --gen-suppressions=yes by hand, in which case it would be advisable to read through this

section.

Each error to be suppressed is described very specifically, to minimise the possibility that a suppression-directive

inadvertently suppresses a bunch of similar errors which you did want to see. The suppression mechanism is designed

to allow precise yet flexible specification of errors to suppress.

If you use the -v option, at the end of execution, Valgrind prints out one line for each used suppression, giving the

number of times it got used, its name and the filename and line number where the suppression is defined. Depending

on the suppression kind, the filename and line number are optionally followed by additional information (such as the

number of blocks and bytes suppressed by a Memcheck leak suppression). Here’s the suppressions used by a run of

valgrind -v --tool=memcheck ls -l:

--1610-- used_suppression: 2 dl-hack3-cond-1 /usr/lib/valgrind/default.supp:1234

--1610-- used_suppression: 2 glibc-2.5.x-on-SUSE-10.2-(PPC)-2a /usr/lib/valgrind/default.supp:1234
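Lines of this shape are easy to tabulate with a script. The Python sketch below assumes the layout shown in the two example lines (count, name, then filename:line, optionally followed by extra information) and further assumes that neither the suppression name nor the file name contains whitespace; the names UsedSuppression and parse_used_suppression are hypothetical.

```python
import re
from typing import NamedTuple, Optional


class UsedSuppression(NamedTuple):
    pid: int
    count: int
    name: str
    filename: str
    line: int
    extra: Optional[str]


USED = re.compile(
    r"^--(\d+)-- used_suppression:\s+(\d+) (\S+) (\S+):(\d+)(?:\s+(.*))?$"
)


def parse_used_suppression(text):
    """Parse one used_suppression line; return None if it does not match."""
    match = USED.match(text)
    if match is None:
        return None
    pid, count, name, filename, line, extra = match.groups()
    return UsedSuppression(int(pid), int(count), name, filename, int(line), extra)
```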

Multiple suppression files are allowed. Valgrind loads suppression patterns from $PREFIX/lib/valgrind/default.supp

unless --default-suppressions=no has been specified. You can ask to add suppressions from additional

files by specifying --suppressions=/path/to/file.supp one or more times.

If you want to understand more about suppressions, look at an existing suppressions file whilst reading the following

documentation. The file glibc-2.3.supp, in the source distribution, provides some good examples.

Each suppression has the following components:

• First line: its name. This merely gives a handy name to the suppression, by which it is referred to in the summary

of used suppressions printed out when a program finishes. It’s not important what the name is; any identifying

string will do.

• Second line: name of the tool(s) that the suppression is for (if more than one, comma-separated), and the name of

the suppression itself, separated by a colon (n.b.: no spaces are allowed), e.g.:

tool_name1,tool_name2:suppression_name

Recall that Valgrind is a modular system, in which different instrumentation tools can observe your program whilst it

is running. Since different tools detect different kinds of errors, it is necessary to say which tool(s) the suppression

is meaningful to.

Tools will complain, at startup, if a tool does not understand any suppression directed to it. Tools ignore

suppressions which are not directed to them. As a result, it is quite practical to put suppressions for all tools

into the same suppression file.

• Next line: a small number of suppression types have extra information after the second line (eg. the Param

suppression for Memcheck)

• Remaining lines: This is the calling context for the error -- the chain of function calls that led to it. There can be

up to 24 of these lines.

Locations may be names of either shared objects, functions, or source lines. They begin with obj:, fun:, or

src: respectively. Function, object, and file names to match against may use the wildcard characters * and ?.

Source lines are specified using the form filename[:lineNumber].

Important note: C++ function names must be mangled. If you are writing suppressions by hand, use the

--demangle=no option to get the mangled names in your error messages. An example of a mangled C++ name

is _ZN9QListView4showEv. This is the form that the GNU C++ compiler uses internally, and the form that

must be used in suppression files. The equivalent demangled name, QListView::show(), is what you see at

the C++ source code level.

A location line may also be simply "..." (three dots). This is a frame-level wildcard, which matches zero or more

frames. Frame level wildcards are useful because they make it easy to ignore varying numbers of uninteresting

frames in between frames of interest. That is often important when writing suppressions which are intended to be

robust against variations in the amount of function inlining done by compilers.

• Finally, the entire suppression must be between curly braces. Each brace must be the first character on its own line.

A suppression only suppresses an error when the error matches all the details in the suppression. Here’s an example:

{
   __gconv_transform_ascii_internal/__mbrtowc/mbtowc
   Memcheck:Value4
   fun:__gconv_transform_ascii_internal
   fun:__mbr*toc
   fun:mbtowc
}

What it means is: for Memcheck only, suppress a use-of-uninitialised-value error, when the data size

is 4, when it occurs in the function __gconv_transform_ascii_internal, when that is called

from any function of name matching __mbr*toc, when that is called from mbtowc. It doesn’t apply

under any other circumstances. The string by which this suppression is identified to the user is

__gconv_transform_ascii_internal/__mbrtowc/mbtowc.

(See Writing suppression files for more details on the specifics of Memcheck’s suppression kinds.)
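The layout rules listed above (braces on their own lines, a name, a tool:kind line without spaces, then at most 24 location lines) can be captured in a small generator. The Python sketch below is illustrative only: the function name format_suppression and the three-space indent are assumptions, and the optional extra-information line used by a few suppression types is not handled.

```python
MAX_FRAMES = 24  # limit on calling-context lines stated in the text


def format_suppression(name, tools, kind, frames, indent="   "):
    """Render one suppression entry as text.

    tools is a list of tool names; frames is a list of location lines
    such as "fun:mbtowc", "obj:/path/to/lib.so", "src:valid.c:321" or "...".
    """
    second_line = ",".join(tools) + ":" + kind
    if any(ch.isspace() for ch in second_line):
        raise ValueError("no spaces are allowed in the tool:kind line")
    if len(frames) > MAX_FRAMES:
        raise ValueError("at most 24 location lines are allowed")
    lines = ["{", indent + name, indent + second_line]
    lines.extend(indent + frame for frame in frames)
    lines.append("}")  # each brace is the first character on its own line
    return "\n".join(lines) + "\n"
```

Generated entries are best treated as a starting point; the output of --gen-suppressions=yes remains the easier route for real errors.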

Another example, again for the Memcheck tool:

{
   libX11.so.6.2/libX11.so.6.2/libXaw.so.7.0
   Memcheck:Value4
   obj:/usr/X11R6/lib/libX11.so.6.2
   obj:/usr/X11R6/lib/libX11.so.6.2
   obj:/usr/X11R6/lib/libXaw.so.7.0
}

This suppresses any size 4 uninitialised-value error which occurs anywhere in libX11.so.6.2, when called from

anywhere in the same library, when called from anywhere in libXaw.so.7.0. The inexact specification of

locations is regrettable, but is about all you can hope for, given that the X11 libraries shipped on the Linux distro on

which this example was made have had their symbol tables removed.

An example of the src: specification, again for the Memcheck tool:

{
   libX11.so.6.2/libX11.so.6.2/libXaw.so.7.0
   Memcheck:Value4
   src:valid.c:321
}

This suppresses any size-4 uninitialised-value error which occurs at line 321 in valid.c.

Although the above two examples do not make this clear, you can freely mix obj:, fun:, and src: lines in a

suppression.

Finally, here’s an example using three frame-level wildcards:

{
   a-contrived-example
   Memcheck:Leak
   fun:malloc
   ...
   fun:ddd
   ...
   fun:ccc
   ...
   fun:main
}

This suppresses Memcheck memory-leak errors, in the case where the allocation was done by main calling (through

any number of intermediaries, including zero) ccc, calling onwards via ddd and eventually to malloc.
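The effect of frame-level wildcards is easiest to see in a small matcher. The Python sketch below models only fun: lines and the "..." wildcard, with * and ? inside names; it is not Valgrind's matching code. The function names are hypothetical, and the choice to ignore any outer frames left over once the pattern is exhausted is an assumption of this sketch.

```python
import re


def _name_matches(glob, name):
    """Match a name against a pattern where * and ? are the only wildcards."""
    parts = []
    for ch in glob:
        if ch == "*":
            parts.append(".*")
        elif ch == "?":
            parts.append(".")
        else:
            parts.append(re.escape(ch))
    return re.fullmatch("".join(parts), name) is not None


def stack_matches(patterns, frames):
    """patterns: suppression location lines; frames: function names, innermost first."""
    if not patterns:
        return True  # assumption: remaining outer frames are ignored
    head, rest = patterns[0], patterns[1:]
    if head == "...":
        # Frame-level wildcard: try skipping zero, one, two, ... frames.
        return any(stack_matches(rest, frames[i:]) for i in range(len(frames) + 1))
    if not frames:
        return False
    kind, _, glob = head.partition(":")
    if kind != "fun":
        raise ValueError("this sketch only handles fun: lines and '...'")
    return _name_matches(glob, frames[0]) and stack_matches(rest, frames[1:])
```

With the contrived example above, a stack of malloc, ddd, helper, ccc, main matches, whereas malloc, ccc, ddd, main does not, because ddd must appear closer to malloc than ccc does.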

2.6. Core Command-line Options

As mentioned above, Valgrind’s core accepts a common set of options. The tools also accept tool-specific options,

which are documented separately for each tool.

Valgrind’s default settings succeed in giving reasonable behaviour in most cases. We group the available options by

rough categories.

2.6.1. Tool-selection Option

The single most important option.

--tool=<toolname> [default: memcheck]

Run the Valgrind tool called toolname, e.g. memcheck, cachegrind, callgrind, helgrind, drd, massif, lackey, none,

exp-sgcheck, exp-bbv, exp-dhat, etc.

2.6.2. Basic Options

These options work with all tools.

-h --help

Show help for all options, both for the core and for the selected tool. If the option is repeated it is equivalent to giving

--help-debug.

--help-debug

Same as --help, but also lists debugging options which usually are only of use to Valgrind’s developers.

--version

Show the version number of the Valgrind core. Tools can have their own version numbers. There is a scheme in place

to ensure that tools only execute when the core version is one they are known to work with. This was done to minimise

the chances of strange problems arising from tool-vs-core version incompatibilities.

-q, --quiet

Run silently, and only print error messages. Useful if you are running regression tests or have some other automated

test machinery.

-v, --verbose

Be more verbose. Gives extra information on various aspects of your program, such as: the shared objects loaded, the

suppressions used, the progress of the instrumentation and execution engines, and warnings about unusual behaviour.

Repeating the option increases the verbosity level.

--trace-children=<yes|no> [default: no]

When enabled, Valgrind will trace into sub-processes initiated via the exec system call. This is necessary for

multi-process programs.

Note that Valgrind does trace into the child of a fork (it would be difficult not to, since fork makes an identical

copy of a process), so this option is arguably badly named. However, most children of fork calls immediately call

exec anyway.

--trace-children-skip=patt1,patt2,...

This option only has an effect when --trace-children=yes is specified. It allows for some children to be

skipped. The option takes a comma separated list of patterns for the names of child executables that Valgrind should

not trace into. Patterns may include the metacharacters ? and *, which have the usual meaning.

This can be useful for pruning uninteresting branches from a tree of processes being run on Valgrind. But you should

be careful when using it. When Valgrind skips tracing into an executable, it doesn’t just skip tracing that executable,

it also skips tracing any of that executable’s child processes. In other words, the flag doesn’t merely cause tracing to

stop at the specified executables -- it skips tracing of entire process subtrees rooted at any of the specified executables.

--trace-children-skip-by-arg=patt1,patt2,...

This is the same as --trace-children-skip, with one difference: the decision as to whether to trace into a

child process is made by examining the arguments to the child process, rather than the name of its executable.

--child-silent-after-fork=<yes|no> [default: no]

When enabled, Valgrind will not show any debugging or logging output for the child process resulting from a fork

call. This can make the output less confusing (although more misleading) when dealing with processes that create

children. It is particularly useful in conjunction with --trace-children=. Use of this option is also strongly

recommended if you are requesting XML output (--xml=yes), since otherwise the XML from child and parent may

become mixed up, which usually makes it useless.

--vgdb=<no|yes|full> [default: yes]

Valgrind will provide "gdbserver" functionality when --vgdb=yes or --vgdb=full is specified. This allows

an external GNU GDB debugger to control and debug your program when it runs on Valgrind. --vgdb=full

incurs significant performance overheads, but provides more precise breakpoints and watchpoints. See Debugging

your program using Valgrind’s gdbserver and GDB for a detailed description.

If the embedded gdbserver is enabled but no gdb is currently being used, the vgdb command line utility can send

"monitor commands" to Valgrind from a shell. The Valgrind core provides a set of Valgrind monitor commands. A

tool can optionally provide tool specific monitor commands, which are documented in the tool specific chapter.

--vgdb-error=<number> [default: 999999999]

Use this option when the Valgrind gdbserver is enabled with --vgdb=yes or --vgdb=full. Tools that report

errors will wait for "number" errors to be reported before freezing the program and waiting for you to connect with

GDB. It follows that a value of zero will cause the gdbserver to be started before your program is executed. This is

typically used to insert GDB breakpoints before execution, and also works with tools that do not report errors, such as

Massif.

--vgdb-stop-at=<set> [default: none]

Use this option when the Valgrind gdbserver is enabled with --vgdb=yes or --vgdb=full. The Valgrind

gdbserver will be invoked for each error after --vgdb-error errors have been reported. You can additionally ask the

Valgrind gdbserver to be invoked for other events, specified in one of the following ways:

• a comma separated list of one or more of startup exit valgrindabexit.

The values startup exit valgrindabexit respectively indicate to invoke gdbserver before your program is

executed, after the last instruction of your program, on Valgrind abnormal exit (e.g. internal error, out of memory,

...).

Note: startup and --vgdb-error=0 will both cause Valgrind gdbserver to be invoked before your program

is executed. The --vgdb-error=0 will in addition cause your program to stop on all subsequent errors.

• all to specify the complete set. It is equivalent to --vgdb-stop-at=startup,exit,valgrindabexit.

• none for the empty set.
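The three ways of writing the set can be summarised in a short Python sketch. It models the documented syntax only and is not taken from Valgrind's option parser; the function name parse_vgdb_stop_at is hypothetical, as is the decision to raise ValueError for unknown event names.

```python
EVENTS = ("startup", "exit", "valgrindabexit")


def parse_vgdb_stop_at(value):
    """Turn a --vgdb-stop-at value into the set of selected events."""
    if value == "all":
        return set(EVENTS)  # same as listing all three events
    if value == "none":
        return set()
    chosen = value.split(",")
    unknown = [event for event in chosen if event not in EVENTS]
    if unknown:
        raise ValueError("unknown event(s): " + ", ".join(unknown))
    return set(chosen)
```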

--track-fds=<yes|no> [default: no]

When enabled, Valgrind will print out a list of open file descriptors on exit or on request, via the gdbserver monitor

command v.info open_fds. Along with each file descriptor is printed a stack backtrace of where the file was

opened and any details relating to the file descriptor such as the file name or socket details.

--time-stamp=<yes|no> [default: no]

When enabled, each message is preceded with an indication of the elapsed wallclock time since startup, expressed as

days, hours, minutes, seconds and milliseconds.

--log-fd=<number> [default: 2, stderr]

Specifies that Valgrind should send all of its messages to the specified file descriptor. The default, 2, is the standard

error channel (stderr). Note that this may interfere with the client’s own use of stderr, as Valgrind’s output will be

interleaved with any output that the client sends to stderr.

--log-file=<filename>

Specifies that Valgrind should send all of its messages to the specified file. If the file name is empty, it causes an

abort. There are four special format specifiers that can be used in the file name.

%p is replaced with the current process ID. This is very useful for programs that invoke multiple processes. WARNING:

If you use --trace-children=yes and your program invokes multiple processes OR your program forks without

calling exec afterwards, and you don’t use this specifier (or the %q specifier below), the Valgrind output from all those

processes will go into one file, possibly jumbled up, and possibly incomplete. Note: If the program forks and calls

exec afterwards, Valgrind output of the child from the period between fork and exec will be lost. Fortunately this gap

is really tiny for most programs; and modern programs use posix_spawn anyway.

%n is replaced with a file sequence number unique for this process. This is useful for processes that produce several

files from the same filename template.

%q{FOO} is replaced with the contents of the environment variable FOO. If the {FOO} part is malformed, it causes an

abort. This specifier is rarely needed, but very useful in certain circumstances (eg. when running MPI programs). The

idea is that you specify a variable which will be set differently for each process in the job, for example BPROC_RANK

or whatever is applicable in your MPI setup. If the named environment variable is not set, it causes an abort. Note

that in some shells, the { and } characters may need to be escaped with a backslash.

%% is replaced with %.

If a % is followed by any other character, it causes an abort.

If the file name specifies a relative file name, it is put in the program’s initial working directory: this is the current

directory when the program started its execution after the fork or after the exec. If it specifies an absolute file name

(ie. starts with ’/’) then it is put there.
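
The expansion rules above can be summarised in a small sketch. This is an illustration of the documented behaviour, not Valgrind's own code: the function name expand_log_name and its pid, seq and env parameters are hypothetical, and every condition the text describes as causing an abort is modelled by raising ValueError. Treating a trailing % as an error is an assumption.

```python
import os


def expand_log_name(template, pid=None, seq=0, env=None):
    """Expand %p, %n, %q{VAR} and %% as described for --log-file.

    Illustration only: not Valgrind's implementation. Conditions the
    manual says cause an abort are modelled by raising ValueError.
    """
    if not template:
        raise ValueError("empty file name")
    pid = os.getpid() if pid is None else pid
    env = os.environ if env is None else env
    out = []
    i = 0
    while i < len(template):
        ch = template[i]
        if ch != "%":
            out.append(ch)
            i += 1
            continue
        spec = template[i + 1:i + 2]  # character after '%', or '' at the end
        if spec == "p":
            out.append(str(pid))      # current process ID
            i += 2
        elif spec == "n":
            out.append(str(seq))      # per-process file sequence number
            i += 2
        elif spec == "%":
            out.append("%")           # literal percent sign
            i += 2
        elif spec == "q":
            close = template.find("}", i + 3)
            if template[i + 2:i + 3] != "{" or close == -1 or close == i + 3:
                raise ValueError("malformed %q{...} specifier")
            name = template[i + 3:close]
            if name not in env:
                raise ValueError("environment variable %s is not set" % name)
            out.append(env[name])
            i = close + 1
        else:
            # Any other character (or, by assumption, a trailing '%').
            raise ValueError("'%' followed by an unsupported character")
    return "".join(out)
```

For example, expand_log_name("vg.%p.%q{RANK}.log", pid=42, env={"RANK": "3"}) returns "vg.42.3.log", where RANK stands in for whatever per-process variable your MPI setup provides.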

--log-socket=<ip-address:port-number>

Specifies that Valgrind should send all of its messages to the specified port at the specified IP address. The port

may be omitted, in which case port 1500 is used. If a connection cannot be made to the specified socket, Valgrind

falls back to writing output to the standard error (stderr). This option is intended to be used in conjunction with the

valgrind-listener program. For further details, see the commentary in the manual.
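
As a rough sketch of the argument form, the following splits an ip-address:port-number string and applies the default port of 1500 when the port is omitted. The function name parse_log_socket is hypothetical, and rejecting an empty or non-numeric port is an assumption of this sketch rather than documented Valgrind behaviour.

```python
def parse_log_socket(arg, default_port=1500):
    """Split an 'ip-address:port-number' argument; the port may be omitted."""
    host, sep, port = arg.partition(":")
    if not host:
        raise ValueError("missing IP address")
    if not sep:
        # No ':' present, so fall back to the documented default port.
        return host, default_port
    if not port.isdigit():
        # Assumption: an empty or non-numeric port is treated as an error.
        raise ValueError("port must be a decimal number")
    return host, int(port)
```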

2.6.3. Error-related Options

These options are used by all tools that can report errors, e.g. Memcheck, but not Cachegrind.

--xml=<yes|no> [default: no]

When enabled, the important parts of the output (e.g. tool error messages) will be in XML format rather than plain

text. Furthermore, the XML output will be sent to a different output channel than the plain text output. Therefore,

you also must use one of --xml-fd, --xml-file or --xml-socket to specify where the XML is to be sent.

Less important messages will still be printed in plain text, but because the XML output and plain text output are sent

to different output channels (the destination of the plain text output is still controlled by --log-fd, --log-file

and --log-socket) this should not cause problems.

This option is aimed at making life easier for tools that consume Valgrind’s output as input, such as GUI front ends.

Currently this option works with Memcheck, Helgrind, DRD and SGcheck. The output format is specified in the file

docs/internals/xml-output-protocol4.txt in the source tree for Valgrind 3.5.0 or later.

The recommended options for a GUI to pass, when requesting XML output, are: --xml=yes to enable XML output,

--xml-file to send the XML output to a (presumably GUI-selected) file, --log-file to send the plain text

output to a second GUI-selected file, --child-silent-after-fork=yes, and -q to restrict the plain text

output to critical error messages created by Valgrind itself. For example, failure to read a specified suppressions file

counts as a critical error message. In this way, for a successful run the text output file will be empty. But if it isn’t

empty, then it will contain important information which the GUI user should be made aware of.
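
A front end might assemble the recommended options along the following lines. This is a minimal sketch: the helper names gui_valgrind_argv and text_log_needs_attention are hypothetical, the file paths are chosen by the caller, and counting a whitespace-only text file as empty is an assumption.

```python
def gui_valgrind_argv(xml_path, log_path, program, program_args=()):
    """Build the argument vector a front end might pass to Valgrind."""
    return [
        "valgrind",
        "--xml=yes",                      # enable XML output
        "--xml-file=" + xml_path,         # XML goes to a GUI-selected file
        "--log-file=" + log_path,         # plain text goes to a second file
        "--child-silent-after-fork=yes",
        "-q",                             # plain text limited to critical messages
        program,
        *program_args,
    ]


def text_log_needs_attention(log_text):
    """A successful run leaves the plain-text file empty."""
    return bool(log_text.strip())
```

The list returned by gui_valgrind_argv can be handed to subprocess.run; once the run finishes, the front end reads the plain text file and shows its contents to the user if text_log_needs_attention returns True.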

--xml-fd=<number> [default: -1, disabled]

Specifies that Valgrind should send its XML output to the specified file descriptor. It must be used in conjunction

with --xml=yes.

--xml-file=<filename>

Specifies that Valgrind should send its XML output to the specified file. It must be used in conjunction with

--xml=yes. Any %p or %q sequences appearing in the filename are expanded in exactly the same way as they

are for --log-file. See the description of --log-file for details.

--xml-socket=<ip-address:port-number>

Specifies that Valgrind should send its XML output to the specified port at the specified IP address. It must be used in

conjunction with --xml=yes. The form of the argument is the same as that used by --log-socket. See the

description of --log-socket for further details.

--xml-user-comment=<string>

Embeds an extra user comment string at the start of the XML output. Only works when --xml=yes is specified;

ignored otherwise.

--demangle=<yes|no> [default: yes]

Enable/disable automatic demangling (decoding) of C++ names. Enabled by default. When enabled, Valgrind will

attempt to translate encoded C++ names back to something approaching the original. The demangler handles symbols

mangled by g++ versions 2.X, 3.X and 4.X.

An important fact about demangling is that function names mentioned in suppressions files should be in their mangled

form. Valgrind does not demangle function names when searching for applicable suppressions, because to do otherwise

would make suppression file contents dependent on the state of Valgrind’s demangling machinery, and also slow down

suppression matching.

--num-callers=<number> [default: 12]

Specifies the maximum number of entries shown in stack traces that identify program locations. Note that errors

are commoned up using only the top four function locations (the place in the current function, and that of its three

immediate callers). So this doesn’t affect the total number of errors reported.

The maximum value for this is 500. Note that higher settings will make Valgrind run a bit more slowly and take a bit

more memory, but can be useful when working with programs with deeply-nested call chains.

--unw-stack-scan-thresh=<number> [default: 0], --unw-stack-scan-frames=<number> [default: 5]

Stack-scanning support is available only on ARM targets.

These flags enable and control stack unwinding by stack scanning. When the normal stack unwinding mechanisms --

usage of Dwarf CFI records, and frame-pointer following -- fail, stack scanning may be able to recover a stack trace.

Note that stack scanning is an imprecise, heuristic mechanism that may give very misleading results, or none at all.

It should be used only in emergencies, when normal unwinding fails, and it is important to nevertheless have stack

traces.

Stack scanning is a simple technique: the unwinder reads words from the stack, and tries to guess which of them might

be return addresses, by checking to see if they point just after ARM or Thumb call instructions. If so, the word is

added to the backtrace.

The main danger occurs when a function call returns, leaving its return address exposed, and a new function is called,

but the new function does not overwrite the old address. The result of this is that the backtrace may contain entries for

functions which have already returned, and so be very confusing.

A second limitation of this implementation is that it will scan only the page (4KB, normally) containing the starting

stack pointer. If the stack frames are large, this may result in only a few (or not even any) being present in the trace.

Also, if you are unlucky and have an initial stack pointer near the end of its containing page, the scan may miss all

interesting frames.

By default stack scanning is disabled. The normal use case is to ask for it when a stack trace would otherwise be very

short. So, to enable it, use --unw-stack-scan-thresh=number. This requests Valgrind to try using stack

scanning to "extend" stack traces which contain fewer than number frames.

If stack scanning does take place, it will only generate at most the number of frames specified by

--unw-stack-scan-frames. Typically, stack scanning generates so many garbage entries that this value

is set to a low value (5) by default. In no case will a stack trace larger than the value specified by --num-callers

be created.
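
The interplay of the three limits can be read as the following upper bound on the length of a trace. This is only one interpretation of the description above, not Valgrind's unwinder: the function name max_trace_length is hypothetical, and unwound stands for the number of frames the normal unwinding mechanisms produced.

```python
def max_trace_length(unwound, thresh=0, scan_frames=5, num_callers=12):
    """Upper bound on trace length after optional stack scanning."""
    if unwound < thresh:
        # Scanning may extend a short trace by at most scan_frames entries.
        unwound += scan_frames
    # A trace never exceeds the value given by --num-callers.
    return min(unwound, num_callers)
```

With the default threshold of 0 the condition is never true, which matches stack scanning being disabled by default.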

--error-limit=<yes|no> [default: yes]

When enabled, Valgrind stops reporting errors after 10,000,000 in total, or 1,000 different ones, have been seen. This

is to stop the error tracking machinery from becoming a huge performance overhead in programs with many errors.

--error-exitcode=<number> [default: 0]

Specifies an alternative exit code to return if Valgrind reported any errors in the run. When set to the default value

(zero), the return value from Valgrind will always be the return value of the process being simulated. When set to a

nonzero value, that value is returned instead, if Valgrind detects any errors. This is useful for using Valgrind as part

of an automated test suite, since it makes it easy to detect test cases for which Valgrind has reported errors, just by

inspecting return codes.
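
In a test harness this might look like the sketch below. The value 99 is an arbitrary choice, and the function names and result labels are hypothetical. Note that a program which itself exits with the chosen code cannot be told apart from a run in which Valgrind reported errors, so pick a value your program never returns.

```python
import subprocess


def valgrind_command(program_argv, error_exitcode=99):
    """Prefix a test command with Valgrind and a distinctive error exit code."""
    return ["valgrind", "--error-exitcode=%d" % error_exitcode, *program_argv]


def classify_exit(returncode, error_exitcode=99):
    """Interpret the exit status of a run started with valgrind_command()."""
    if returncode == error_exitcode:
        return "valgrind-errors"   # Valgrind reported at least one error
    if returncode == 0:
        return "pass"              # program succeeded, no errors reported
    return "program-failed"        # the program's own failure status


def run_under_valgrind(program_argv, error_exitcode=99):
    """Run the test; requires valgrind to be installed and on PATH."""
    done = subprocess.run(valgrind_command(program_argv, error_exitcode))
    return classify_exit(done.returncode, error_exitcode)
```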

--exit-on-first-error=<yes|no> [default: no]

If this option is enabled, Valgrind exits on the first error. A nonzero exit value must be defined using the

--error-exitcode option. Useful if you are running regression tests or have some other automated test

machinery.

--error-markers=<begin>,<end> [default: none]

When errors are output as plain text (i.e. XML not used), --error-markers instructs Valgrind to output a line containing

the begin (end) string before (after) each error.

Such marker lines facilitate searching for errors and/or extracting errors in an output file that contains Valgrind errors

mixed with the program output.

Note that empty markers are accepted. So, only using a begin (or an end) marker is possible.
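
Extracting the errors from mixed output could then be sketched as follows. The function name extract_errors is hypothetical, and the sketch assumes that both markers are non-empty and that neither string occurs in ordinary program output or inside an error's own text.

```python
def extract_errors(lines, begin, end):
    """Collect the lines found between each begin/end marker line pair."""
    errors, current = [], None
    for line in lines:
        if current is None:
            if begin in line:
                current = []           # a begin marker line opens a new error
        elif end in line:
            errors.append(current)     # an end marker line closes the error
            current = None
        else:
            current.append(line)       # a line belonging to the current error
    return errors
```

Each element of the returned list holds the lines of one error, with the marker lines themselves left out.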

--sigill-diagnostics=<yes|no> [default: yes]

Enable/disable printing of illegal instruction diagnostics. Enabled by default, but defaults to disabled when --quiet

is given. The default can always be explicitly overridden by giving this option.

When enabled, a warning message will be printed, along with some diagnostics, whenever an instruction is encountered

that Valgrind cannot decode or translate, before the program is given a SIGILL signal. Often an illegal instruction

indicates a bug in the program or missing support for the particular instruction in Valgrind. But some programs do

deliberately try to execute an instruction that might be missing and trap the SIGILL signal to detect processor features.

Using this flag makes it possible to avoid the diagnostic output that you would otherwise get in such cases.

--keep-debuginfo=<yes|no> [default: no]

When enabled, keep ("archive") symbols and all other debuginfo for unloaded code. This allows saved stack traces to

include file/line info for code that has been dlclose’d (or similar). Be careful with this, since it can lead to unbounded

memory use for programs which repeatedly load and unload shared objects.

Some tools and some functionalities have only limited support for archived debug info. Memcheck fully supports

it. Generally, tools that report errors can use archived debug info to show the error stack traces. The known

limitations are: Helgrind’s past access stack trace of a race condition does not use archived debug info. Massif (and

more generally the xtree Massif output format) does not make use of archived debug info. Only Memcheck has been

(somewhat) tested with --keep-debuginfo=yes, so other tools may have unknown limitations.

--show-below-main=<yes|no> [default: no]

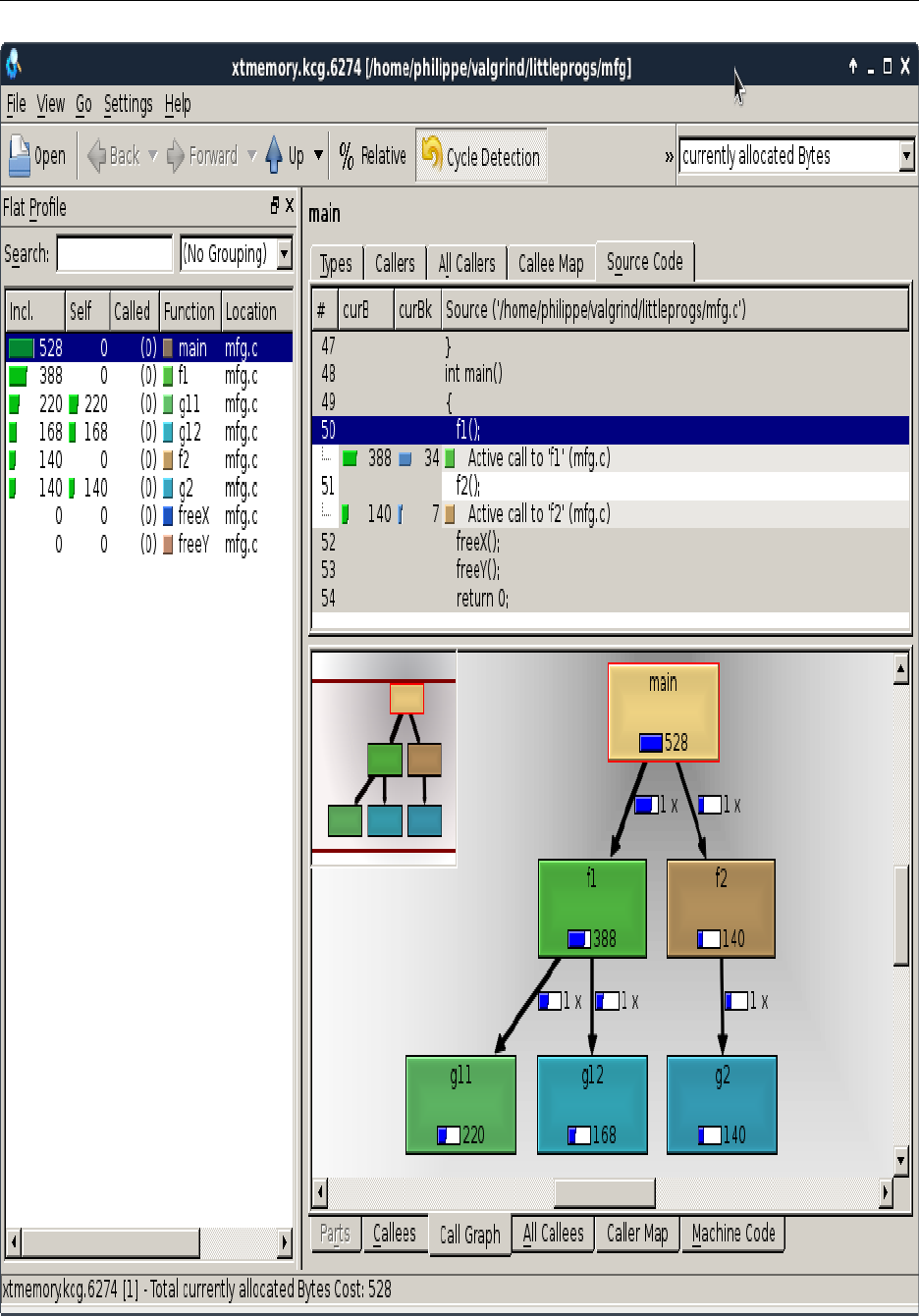

By default, stack traces for errors do not show any functions that appear beneath main because most of the time it’s