Voice API For Windows Operating Systems Programming Guide 6.2 Win V1

User Manual: 6.2



Voice API for Windows Operating Systems Programming Guide

November 2002

05-1831-001

Voice API for Windows Operating Systems Programming Guide – November 2002

INFORMATION IN THIS DOCUMENT IS PROVIDED IN CONNECTION WITH INTEL® PRODUCTS. NO LICENSE, EXPRESS OR IMPLIED, BY ESTOPPEL OR OTHERWISE, TO ANY INTELLECTUAL PROPERTY RIGHTS IS GRANTED BY THIS DOCUMENT. EXCEPT AS PROVIDED IN INTEL'S TERMS AND CONDITIONS OF SALE FOR SUCH PRODUCTS, INTEL ASSUMES NO LIABILITY WHATSOEVER, AND INTEL DISCLAIMS ANY EXPRESS OR IMPLIED WARRANTY, RELATING TO SALE AND/OR USE OF INTEL PRODUCTS INCLUDING LIABILITY OR WARRANTIES RELATING TO FITNESS FOR A PARTICULAR PURPOSE, MERCHANTABILITY, OR INFRINGEMENT OF ANY PATENT, COPYRIGHT OR OTHER INTELLECTUAL PROPERTY RIGHT. Intel products are not intended for use in medical, life saving, or life sustaining applications.

Intel may make changes to specifications and product descriptions at any time, without notice.

This Voice API for Windows Operating Systems Programming Guide as well as the software described in it is furnished under license and may only be used or copied in accordance with the terms of the license. The information in this manual is furnished for informational use only, is subject to change without notice, and should not be construed as a commitment by Intel Corporation. Intel Corporation assumes no responsibility or liability for any errors or inaccuracies that may appear in this document or any software that may be provided in association with this document.

Except as permitted by such license, no part of this document may be reproduced, stored in a retrieval system, or transmitted in any form or by any means without express written consent of Intel Corporation.

Copyright © 2002, Intel Corporation

AlertVIEW, AnyPoint, AppChoice, BoardWatch, BunnyPeople, CablePort, Celeron, Chips, CT Media, Dialogic, DM3, EtherExpress, ETOX, FlashFile, i386, i486, i960, iCOMP, InstantIP, Intel, Intel logo, Intel386, Intel486, Intel740, IntelDX2, IntelDX4, IntelSX2, Intel Create&Share, Intel GigaBlade, Intel InBusiness, Intel Inside, Intel Inside logo, Intel NetBurst, Intel NetMerge, Intel NetStructure, Intel Play, Intel Play logo, Intel SingleDriver, Intel SpeedStep, Intel StrataFlash, Intel TeamStation, Intel Xeon, Intel XScale, IPLink, Itanium, LANDesk, LanRover, MCS, MMX, MMX logo, Optimizer logo, OverDrive, Paragon, PC Dads, PC Parents, PDCharm, Pentium, Pentium II Xeon, Pentium III Xeon, Performance at Your Command, RemoteExpress, Shiva, SmartDie, Solutions960, Sound Mark, StorageExpress, The Computer Inside., The Journey Inside, TokenExpress, Trillium, VoiceBrick, Vtune, and Xircom are trademarks or registered trademarks of Intel Corporation or its subsidiaries in the United States and other countries.

* Other names and brands may be claimed as the property of others.

Publication Date: November 2002

Document Number: 05-1831-001

Intel Converged Communications, Inc.

1515 Route 10

Parsippany, NJ 07054

For Technical Support, visit the Intel Telecom Support Resources website at:

http://developer.intel.com/design/telecom/support/

For Products and Services Information, visit the Intel Communications Systems Products website at:

http://www.intel.com/network/csp/

For Sales Offices and other contact information, visit the Intel Telecom Building Blocks Sales Offices page at:

http://www.intel.com/network/csp/sales/


Contents

About This Publication

Purpose

Intended Audience

How to Use This Publication

Related Information

1 Product Description

1.1 Overview

1.2 R4 API

1.3 Call Progress Analysis

1.4 Tone Generation and Detection Features

1.4.1 Global Tone Detection (GTD)

1.4.2 Global Tone Generation (GTG)

1.4.3 Cadenced Tone Generation

1.5 Dial Pulse Detection

1.6 Play and Record Features

1.6.1 Play and Record Functions

1.6.2 Speed and Volume Control

1.6.3 Transaction Record

1.6.4 Silence Compressed Record

1.6.5 Echo Cancellation Resource

1.7 Send and Receive FSK Data

1.8 Caller ID

1.9 R2/MF Signaling

1.10 TDM Bus Routing

2 Programming Models

2.1 Standard Runtime Library

2.2 Asynchronous Programming Models

2.3 Synchronous Programming Model

3 Device Handling

3.1 Device Concepts

3.2 Voice Device Names

4 Event Handling

4.1 Overview of Event Handling

4.2 Event Management Functions

5 Error Handling

6 Application Development Guidelines

6.1 General Considerations

6.1.1 Busy and Idle States

6.1.2 I/O Terminations

6.1.3 Clearing Structures Before Use

4Voice API for Windows Operating Systems Programming Guide – November 2002

Contents

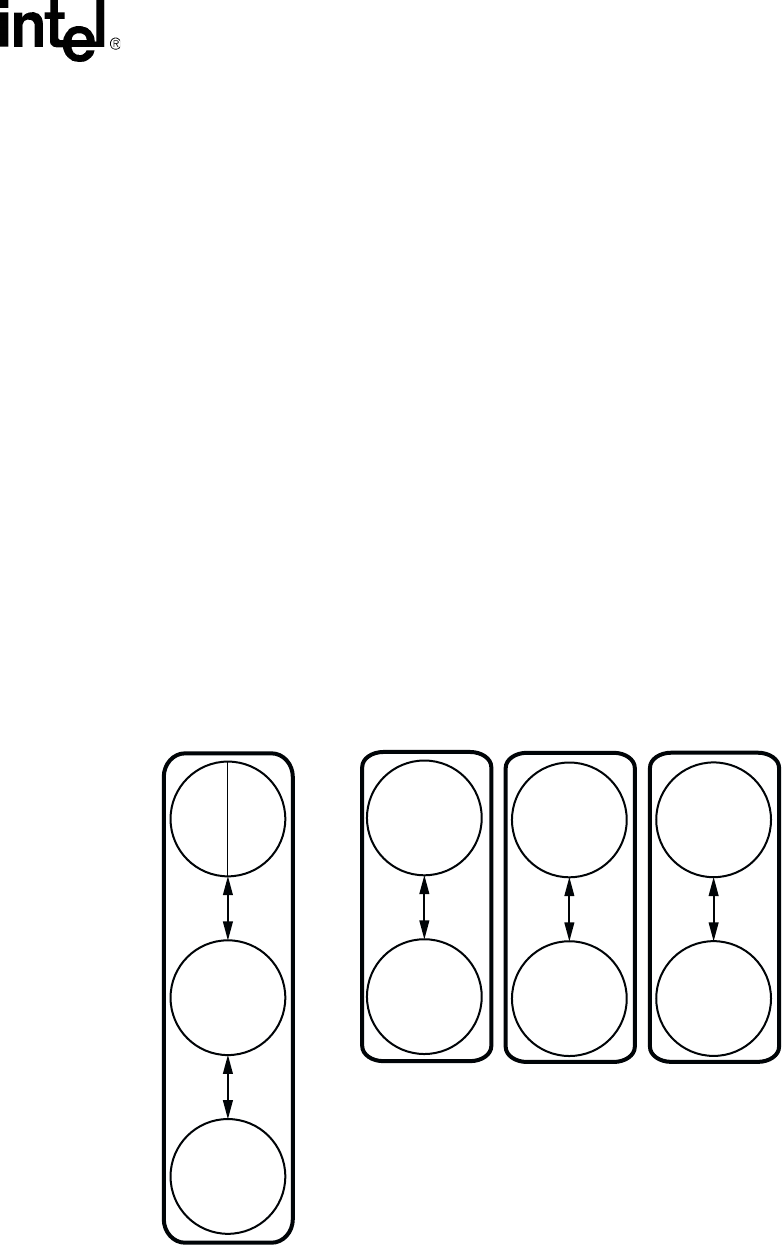

6.2 Fixed and Flexible Routing Configurations . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 34

6.3 Fixed Routing Configuration Restrictions . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 36

6.4 Additional DM3 Considerations . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 36

6.4.1 Call Control Through Global Call API Library . . . . . . . . . . . . . . . . . . . . . . . . . . . . 37

6.4.2 Multi-Threading and Multi-Processing . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 37

6.4.3 DM3 Interoperability. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 37

6.4.4 DM3 Media Loads . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 38

6.4.5 Device Discovery for DM3 and Springware . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 38

6.4.6 Device Initialization Hint. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 38

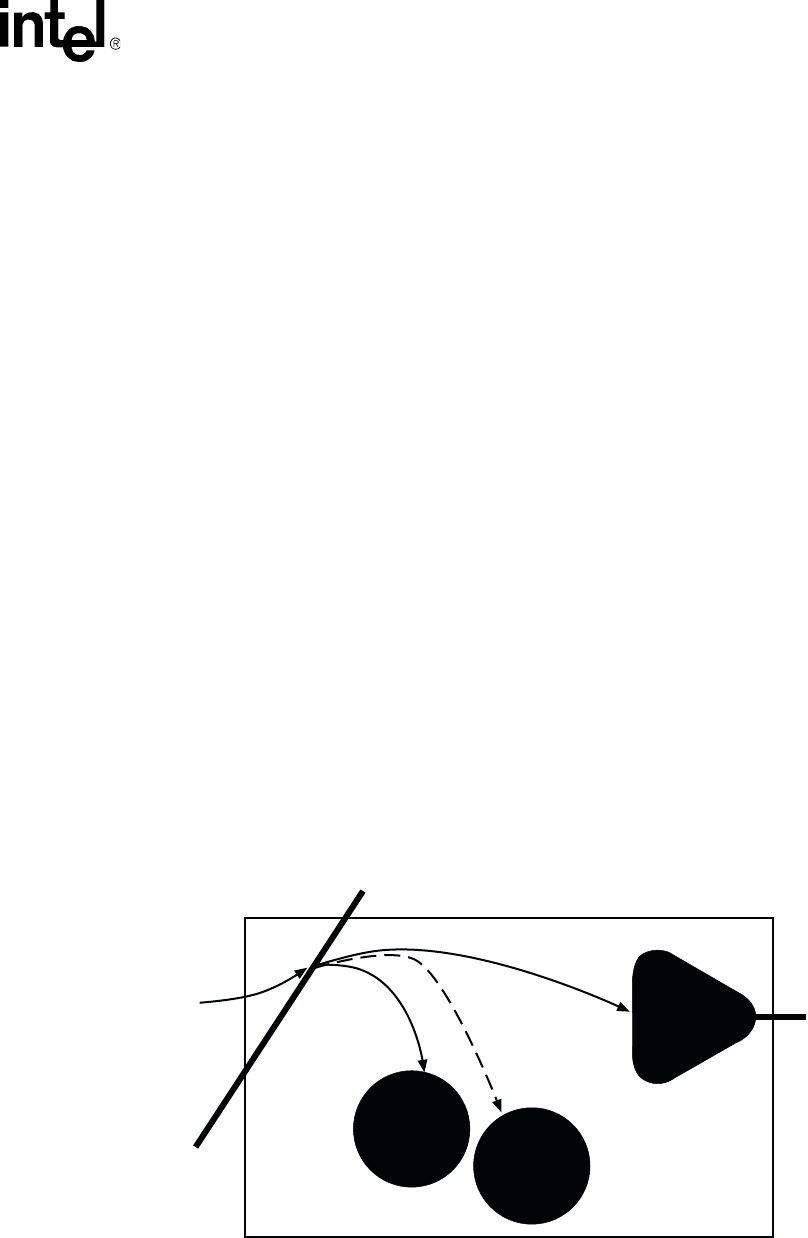

6.4.7 TDM Bus Time Slot Considerations. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 39

6.5 Using Wink Signaling . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .40

6.5.1 Setting Delay Prior to Wink . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 40

6.5.2 Setting Wink Duration . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 41

6.5.3 Receiving an Inbound Wink . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 41

7 Call Progress Analysis . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 43

7.1 Call Progress Analysis Overview . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 43

7.2 Call Progress and Call Analysis Terminology. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 44

7.3 Call Progress Analysis Components . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 44

7.4 Using Call Progress Analysis . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 45

7.4.1 Overview of Steps to Initiate Call Progress Analysis . . . . . . . . . . . . . . . . . . . . . . . 46

7.4.2 Enabling Call Progress Analysis Features in DX_CAP . . . . . . . . . . . . . . . . . . . . . 46

7.4.3 Enabling Call Progress Analysis . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 47

7.4.4 Executing a Dial Function . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 48

7.4.5 Determining the Outcome of a Call . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 48

7.4.6 Obtaining Additional Call Outcome Information. . . . . . . . . . . . . . . . . . . . . . . . . . . 49

7.5 R4 on DM3 Call Progress Analysis Scenarios . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 51

7.6 Tone Detection in Call Progress Analysis. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 51

7.6.1 Overview . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 52

7.6.2 Types of Tones . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 52

7.6.3 Dial Tone Detection . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 53

7.6.4 Ringback Detection . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 54

7.6.5 Busy Tone Detection . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 55

7.6.6 Positive Voice Detection (PVD) . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 55

7.6.7 Positive Answering Machine Detection (PAMD) . . . . . . . . . . . . . . . . . . . . . . . . . . 56

7.6.8 Fax or Modem Tone Detection . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 57

7.6.9 Disconnect Tone Supervision . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 57

7.6.10 Loop Current Detection . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 58

7.7 PBX Expert Tone Set Files and Call Progress Analysis . . . . . . . . . . . . . . . . . . . . . . . . . . . 59

7.8 Default Tone Definitions . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .59

7.9 Modifying Default Tone Definitions . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 60

7.10 SIT Frequency Detection . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 61

7.10.1 Tri-Tone SIT Sequences . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 61

7.10.2 Setting Tri-Tone SIT Frequency Detection Parameters. . . . . . . . . . . . . . . . . . . . .62

7.10.3 Obtaining Tri-Tone SIT Frequency Information . . . . . . . . . . . . . . . . . . . . . . . . . . . 64

7.10.4 Global Tone Detection Tone Memory Usage . . . . . . . . . . . . . . . . . . . . . . . . . . . . 65

7.10.5 Frequency Detection Errors. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 65

7.10.6 Setting Single Tone Frequency Detection Parameters . . . . . . . . . . . . . . . . . . . . . 66

7.10.7 Obtaining Single Tone Frequency Information . . . . . . . . . . . . . . . . . . . . . . . . . . . 66

7.11 Call Progress Analysis Errors . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 67


7.12 Cadence Detection in Basic Call Progress Analysis . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 67

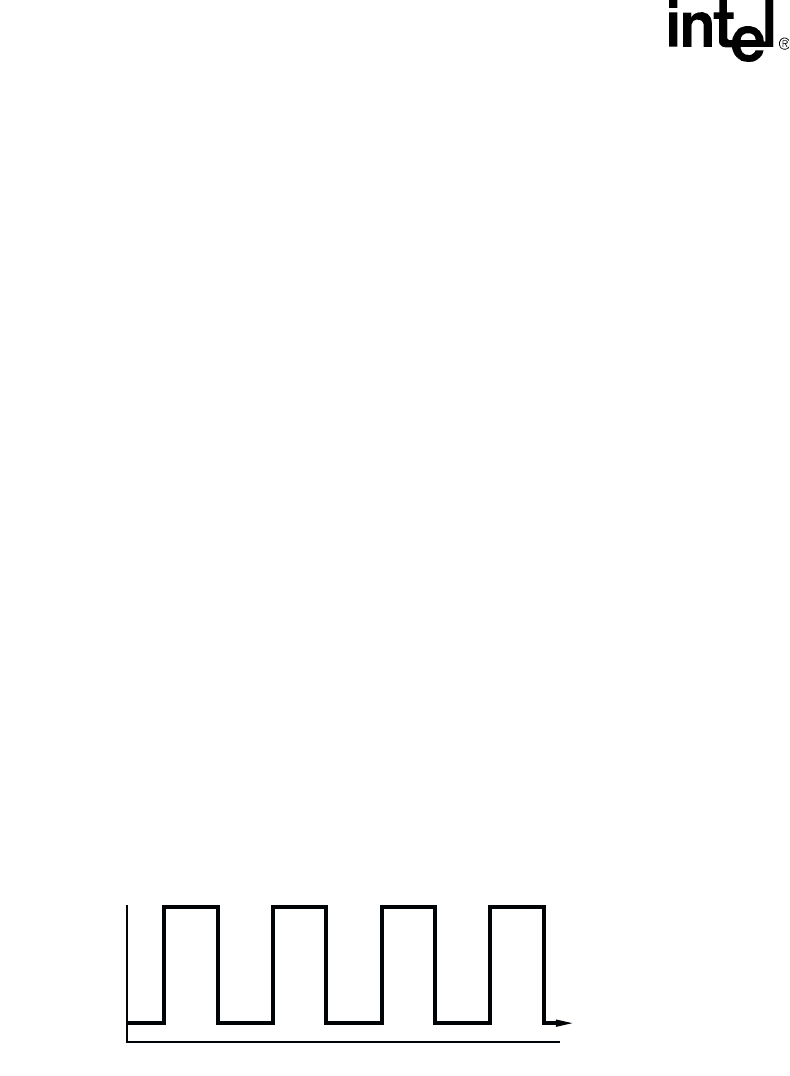

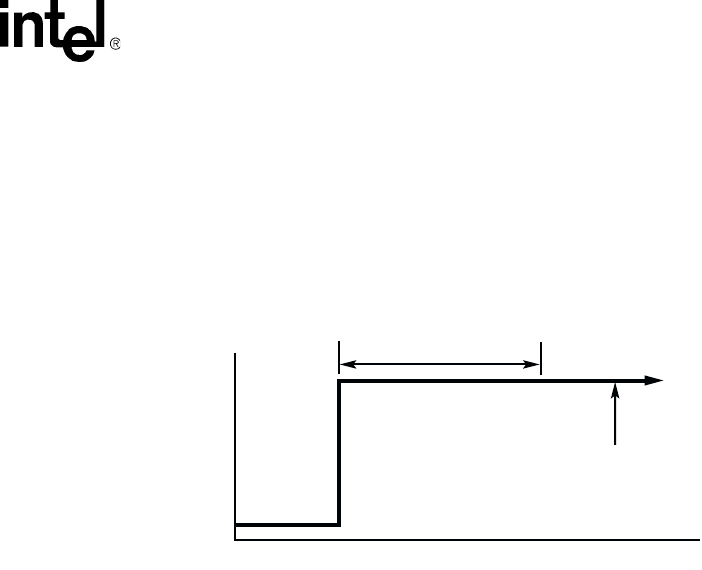

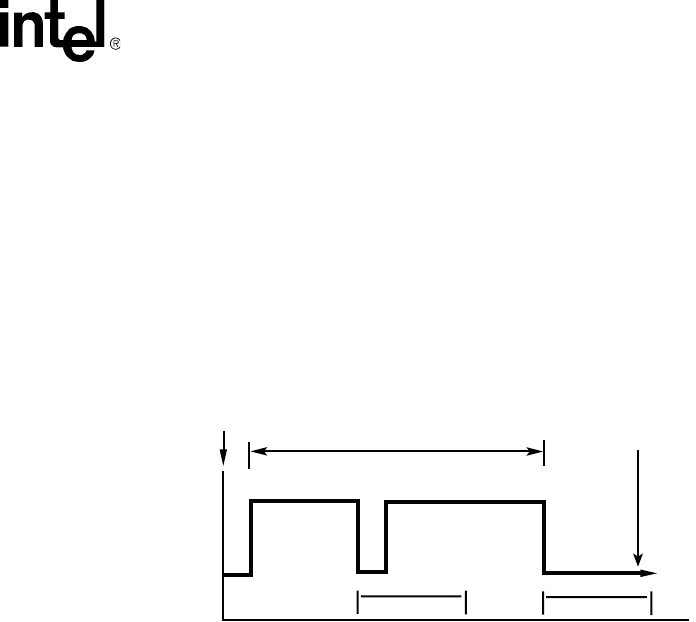

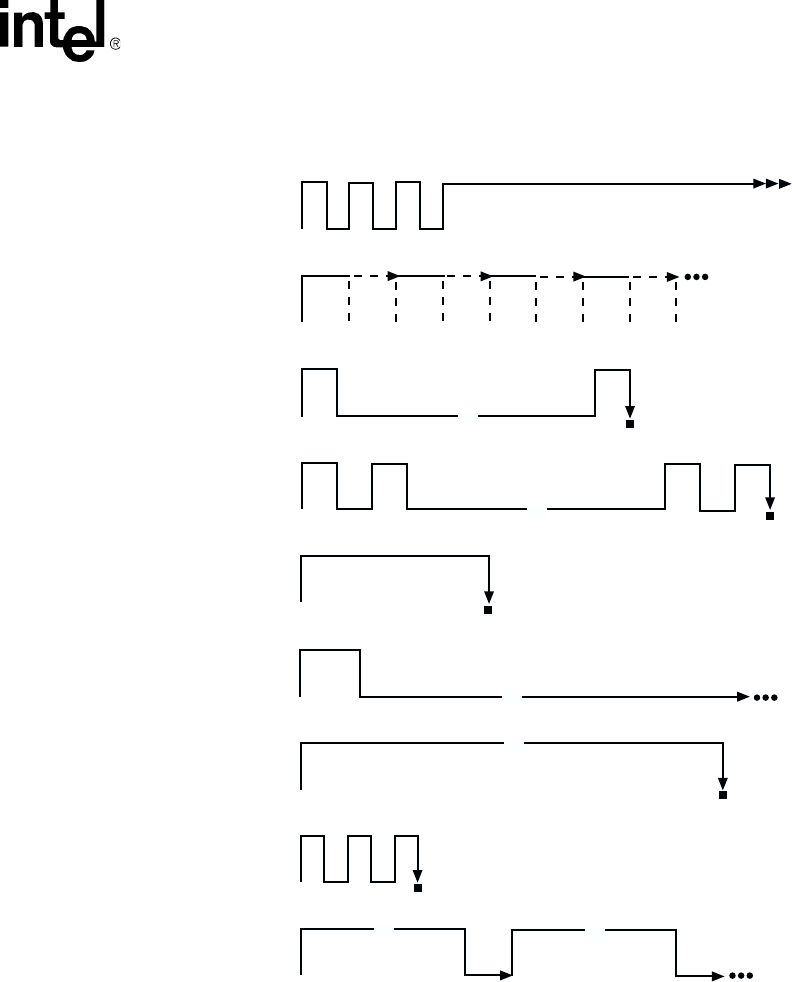

7.12.1 Overview . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 68

7.12.2 Typical Cadence Patterns. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 68

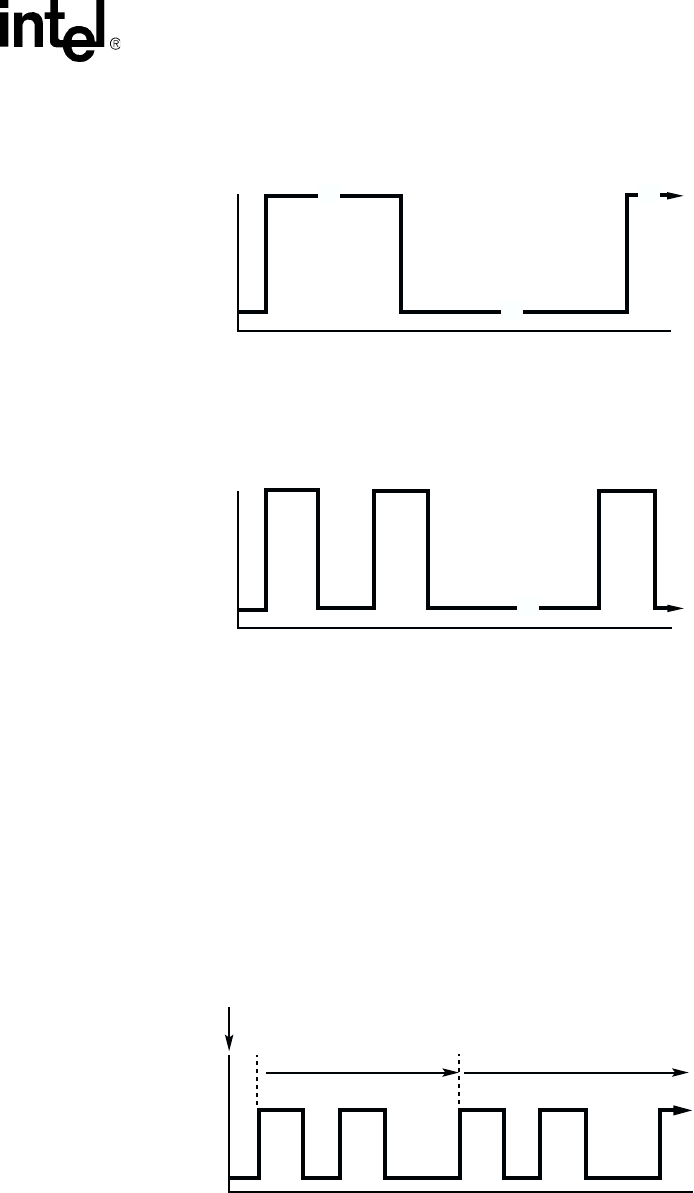

7.12.3 Elements of a Cadence . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 69

7.12.4 Outcomes of Cadence Detection . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 70

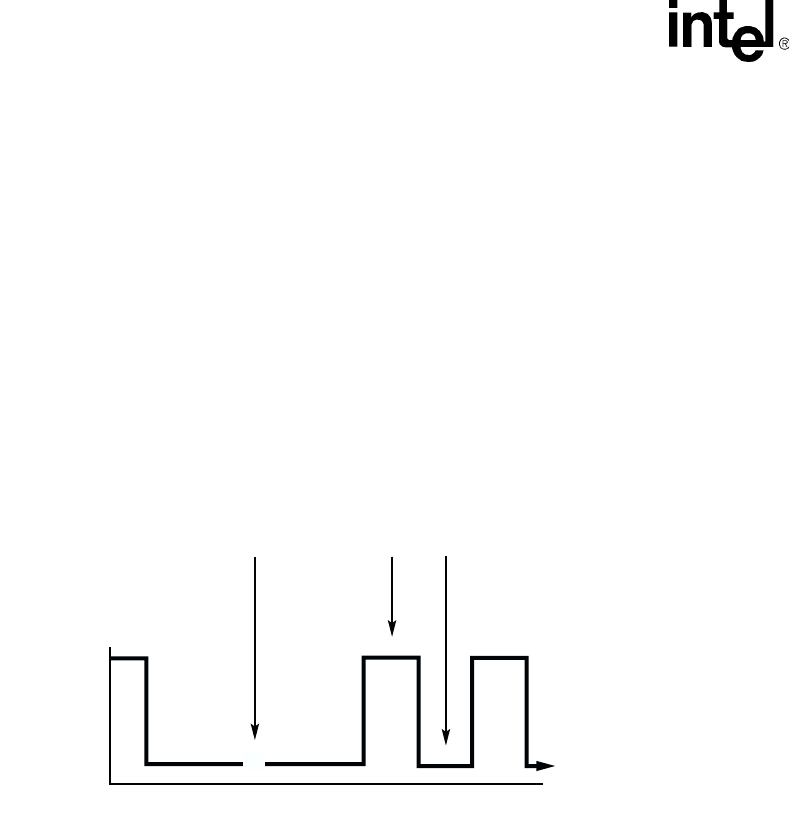

7.12.5 Setting Selected Cadence Detection Parameters. . . . . . . . . . . . . . . . . . . . . . . . . 71

7.12.6 Obtaining Cadence Information . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 75

8 Recording and Playback . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 77

8.1 Overview of Recording and Playback . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 77

8.2 Digital Recording and Playback. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 77

8.3 Play and Record Functions . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 78

8.4 Play and Record Convenience Functions . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 78

8.5 Voice Encoding Methods . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 79

8.6 G.726 Voice Coder . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 80

8.7 Transaction Record . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 81

8.8 Silence Compressed Record . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 81

8.8.1 Overview of Silence Compressed Record . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 81

8.8.2 Enabling Silence Compressed Record . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 82

8.8.3 Encoding Methods Supported in Silence Compressed Record . . . . . . . . . . . . . . 83

8.9 Echo Cancellation Resource . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 84

8.9.1 Overview of Echo Cancellation Resource. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 84

8.9.2 Echo Cancellation Resource Operation . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 84

8.9.3 Modes of Operation . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 86

8.9.4 Echo Cancellation Resource Application Models . . . . . . . . . . . . . . . . . . . . . . . . . 88

9 Speed and Volume Control . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 97

9.1 Speed and Volume Control Overview . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 97

9.2 Speed and Volume Convenience Functions . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 97

9.3 Speed and Volume Adjustment Functions. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 98

9.4 Speed and Volume Modification Tables . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 98

9.5 Play Adjustment Digits . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 102

9.6 Setting Play Adjustment Conditions. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 102

9.7 Explicitly Adjusting Speed and Volume . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 102

10 Send and Receive FSK Data . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 105

10.1 Overview of ADSI and Two-Way FSK Support . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 105

10.2 ADSI Protocol . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 106

10.3 ADSI Operation . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 107

10.4 One-Way ADSI . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 107

10.5 Two-Way ADSI . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 108

10.5.1 Transmit to On-Hook CPE . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 108

10.5.2 Two-Way FSK. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 108

10.6 ADSI and Two-Way FSK Voice Library Support . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 109

10.6.1 Support on DM3 Boards . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 109

10.6.2 Support on Springware Boards. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 110

10.7 Developing ADSI Applications . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 110

10.7.1 Technical Overview of One-Way ADSI Data Transfer . . . . . . . . . . . . . . . . . . . . 110

10.7.2 Implementing One-Way ADSI Using dx_TxIottData( ) . . . . . . . . . . . . . . . . . . . . 111

10.7.3 Technical Overview of Two-Way ADSI Data Transfer . . . . . . . . . . . . . . . . . . . . 112

10.7.4 Implementing Two-Way ADSI Using dx_TxIottData( ) . . . . . . . . . . . . . . . . . . . . 113


10.7.5 Implementing Two-Way ADSI Using dx_TxRxIottData( ) . . . . . . . . . . . . . . . . . . 114

10.8 Modifying Older One-Way ADSI Applications. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 115

11 Caller ID . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 117

11.1 Overview of Caller ID . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .117

11.2 Caller ID Formats . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 117

11.3 Accessing Caller ID Information . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 119

11.4 Enabling Channels to Use the Caller ID Feature . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 120

11.5 Error Handling. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 120

11.6 Caller ID Technical Specifications . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 120

12 Cached Prompt Management. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 123

12.1 Overview of Cached Prompt Management. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 123

12.2 Using Cached Prompt Management. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 123

12.2.1 Discovering Cached Prompt Capability . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 123

12.2.2 Downloading Cached Prompts to a Board. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 124

12.2.3 Playing Cached Prompts . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 124

12.2.4 Recovering from Errors . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 124

12.2.5 Cached Prompt Management Hints and Tips . . . . . . . . . . . . . . . . . . . . . . . . . . . 125

12.3 Cached Prompt Management Example Code . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 125

13 Global Tone Detection and Generation, and Cadenced Tone Generation. . . . . . . . . . . . . . 129

13.1 Global Tone Detection (GTD). . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 129

13.1.1 Overview of Global Tone Detection . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 129

13.1.2 Defining Global Tone Detection Tones . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .130

13.1.3 Building Tone Templates . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 130

13.1.4 Working with Tone Templates . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 132

13.1.5 Retrieving Tone Events . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 132

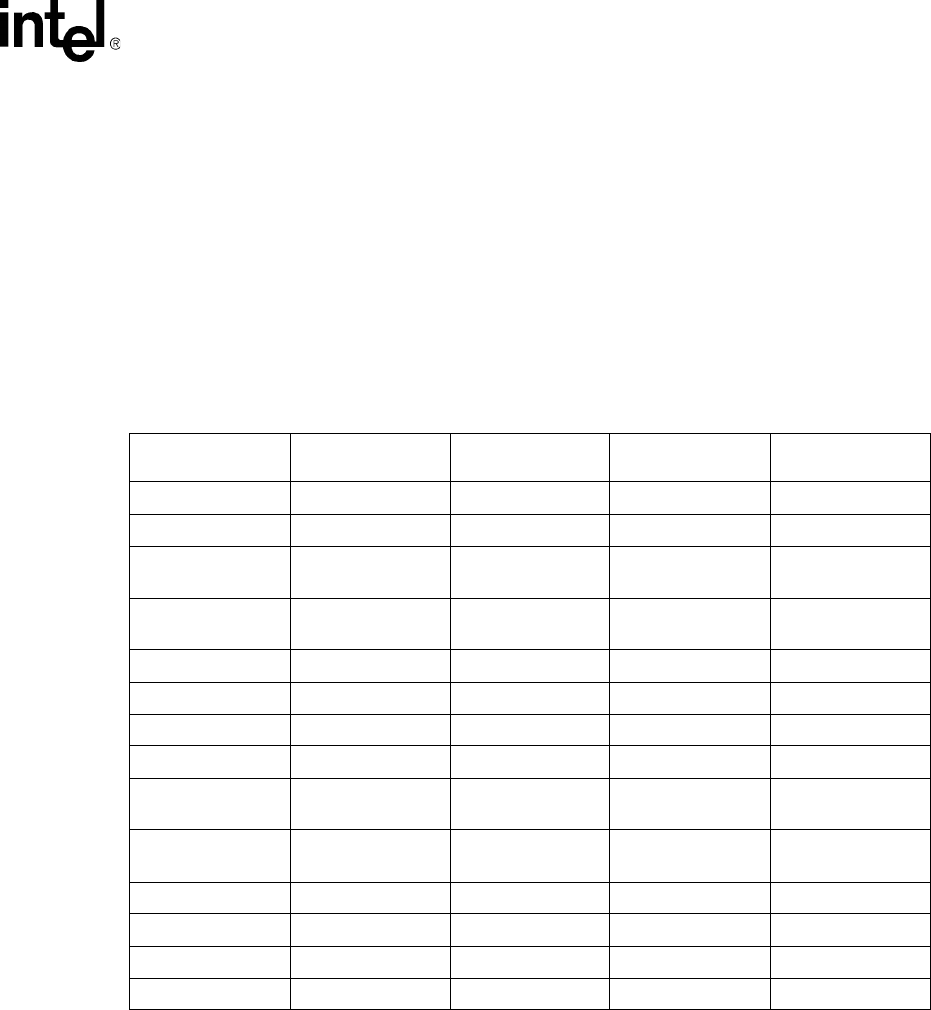

13.1.6 Setting GTD Tones as Termination Conditions . . . . . . . . . . . . . . . . . . . . . . . . . . 133

13.1.7 Maximum Amount of Memory Available for User-Defined Tone Templates . . . . 133

13.1.8 Estimating Memory . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 135

13.1.9 Guidelines for Creating User-Defined Tones. . . . . . . . . . . . . . . . . . . . . . . . . . . . 135

13.1.10 Global Tone Detection Applications. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 138

13.2 Global Tone Generation (GTG) . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 139

13.2.1 Using GTG. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 139

13.2.2 GTG Functions . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 139

13.2.3 Building and Implementing a Tone Generation Template . . . . . . . . . . . . . . . . . . 139

13.3 Cadenced Tone Generation . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 140

13.3.1 Using Cadenced Tone Generation . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 141

13.3.2 How To Generate a Custom Cadenced Tone . . . . . . . . . . . . . . . . . . . . . . . . . . . 141

13.3.3 How To Generate a Non-Cadenced Tone . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 143

13.3.4 TN_GENCAD Data Structure - Cadenced Tone Generation. . . . . . . . . . . . . . . . 143

13.3.5 How To Generate a Standard PBX Call Progress Signal . . . . . . . . . . . . . . . . . . 143

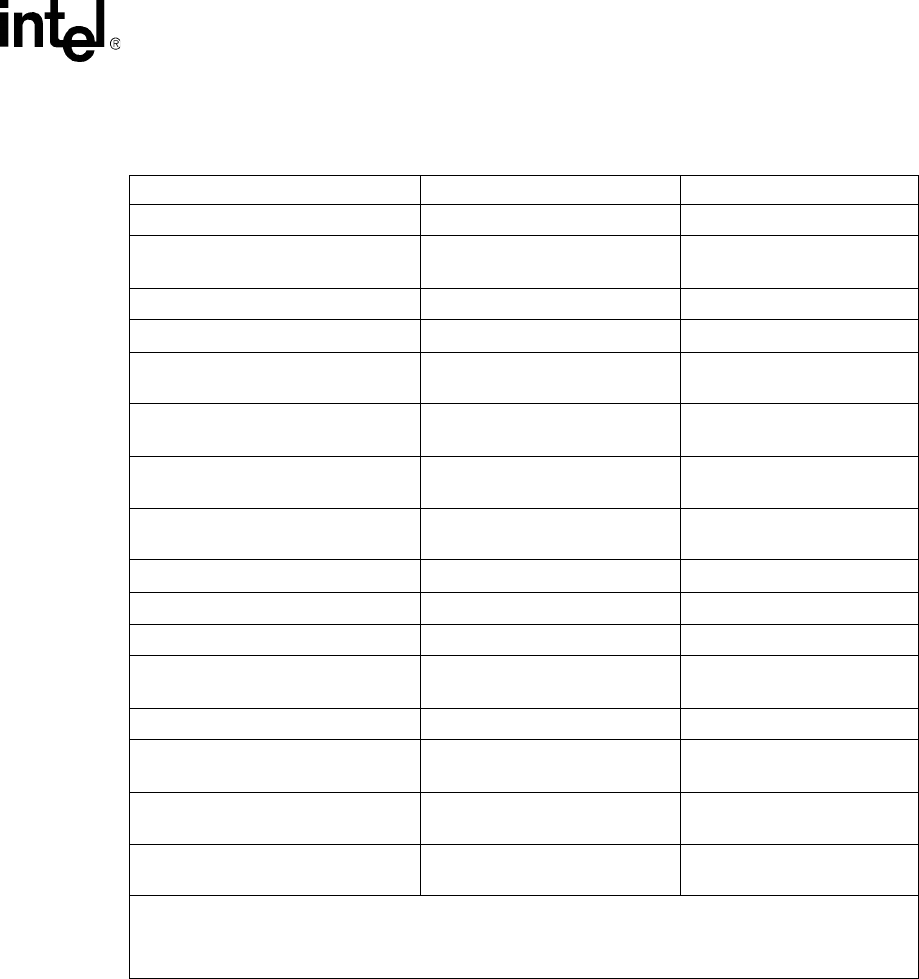

13.3.6 Predefined Set of Standard PBX Call Progress Signals . . . . . . . . . . . . . . . . . . . 144

13.3.7 Important Considerations for Using Predefined Call Progress Signals . . . . . . . . 149

14 Global Dial Pulse Detection . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 151

14.1 Key Features . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 151

14.2 Global DPD Parameters . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 152

14.3 Enabling Global DPD . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 152

14.4 Global DPD Programming Considerations . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .153


14.5 Dial Pulse Detection Digit Type Reporting. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 153

14.6 Defines for Digit Type Reporting . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 154

14.7 Global DPD Programming Procedure . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 154

14.8 Global DPD Example Code (Synchronous Model) . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 154

15 R2/MF Signaling . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 157

15.1 R2/MF Overview . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 157

15.2 Direct Dialing-In Service . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 158

15.3 R2/MF Multifrequency Combinations. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 158

15.4 R2/MF Signal Meanings . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 159

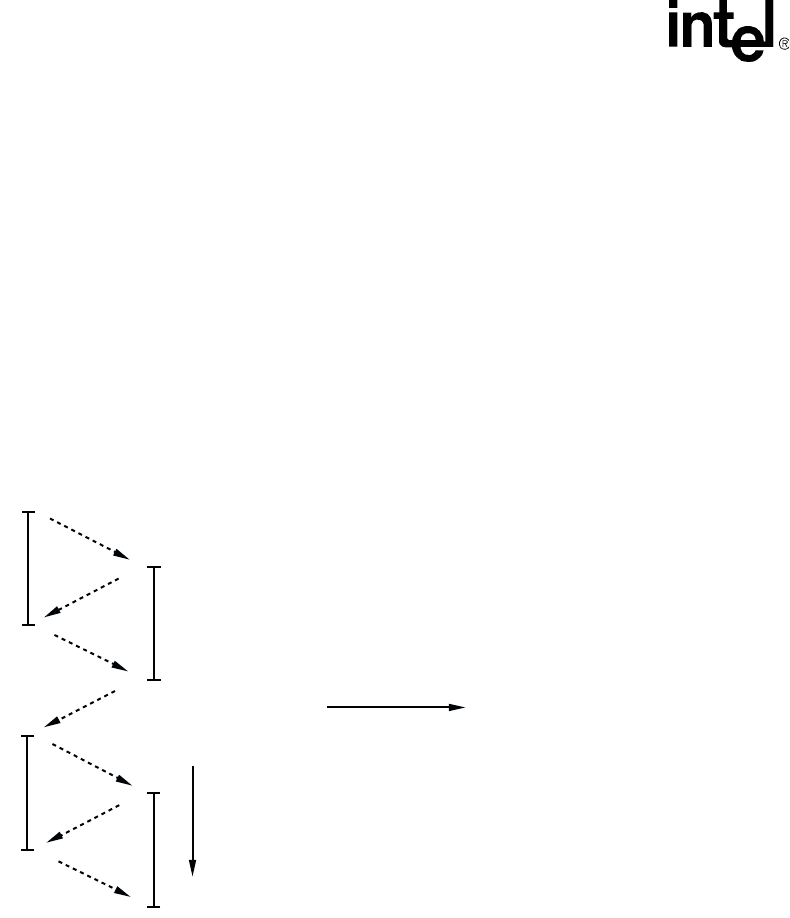

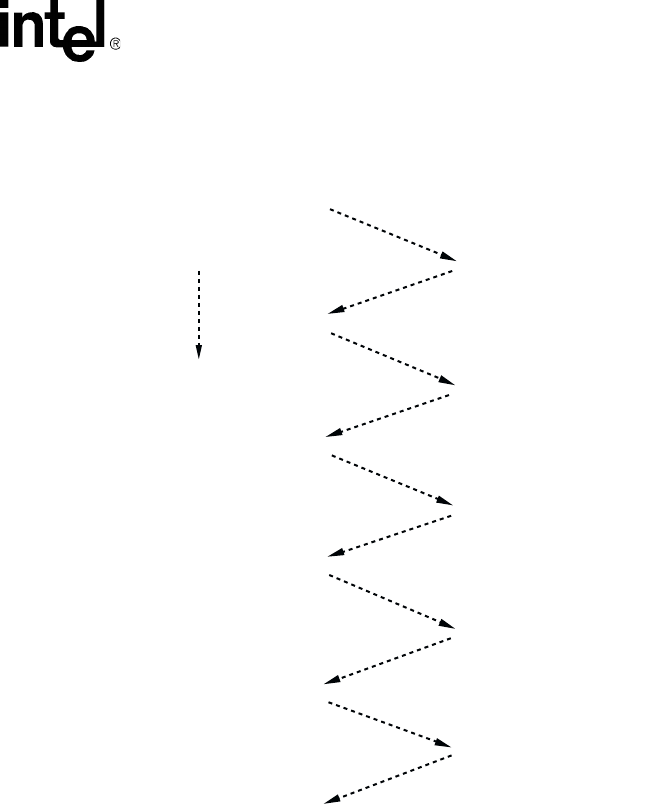

15.5 R2/MF Compelled Signaling . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 165

15.6 R2/MF Voice Library Functions . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 167

15.7 R2/MF Tone Detection Template Memory Requirements . . . . . . . . . . . . . . . . . . . . . . . . 168

16 Syntellect License Automated Attendant. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 169

16.1 Overview of Automated Attendant Function . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 169

16.2 Syntellect License Automated Attendant Functions . . . . . . . . . . . . . . . . . . . . . . . . . . . . 170

16.3 How to Use the Automated Attendant Function Call . . . . . . . . . . . . . . . . . . . . . . . . . . . . 170

17 Building Applications. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 171

17.1 Voice and SRL Libraries . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 171

17.2 Compiling and Linking . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 172

17.2.1 Include Files . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 172

17.2.2 Required Libraries . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 172

17.2.3 Run-time Linking . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 173

17.2.4 Variables for Compiling and Linking . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 173

Glossary . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 175

Index . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 183

8Voice API for Windows Operating Systems Programming Guide – November 2002

Contents

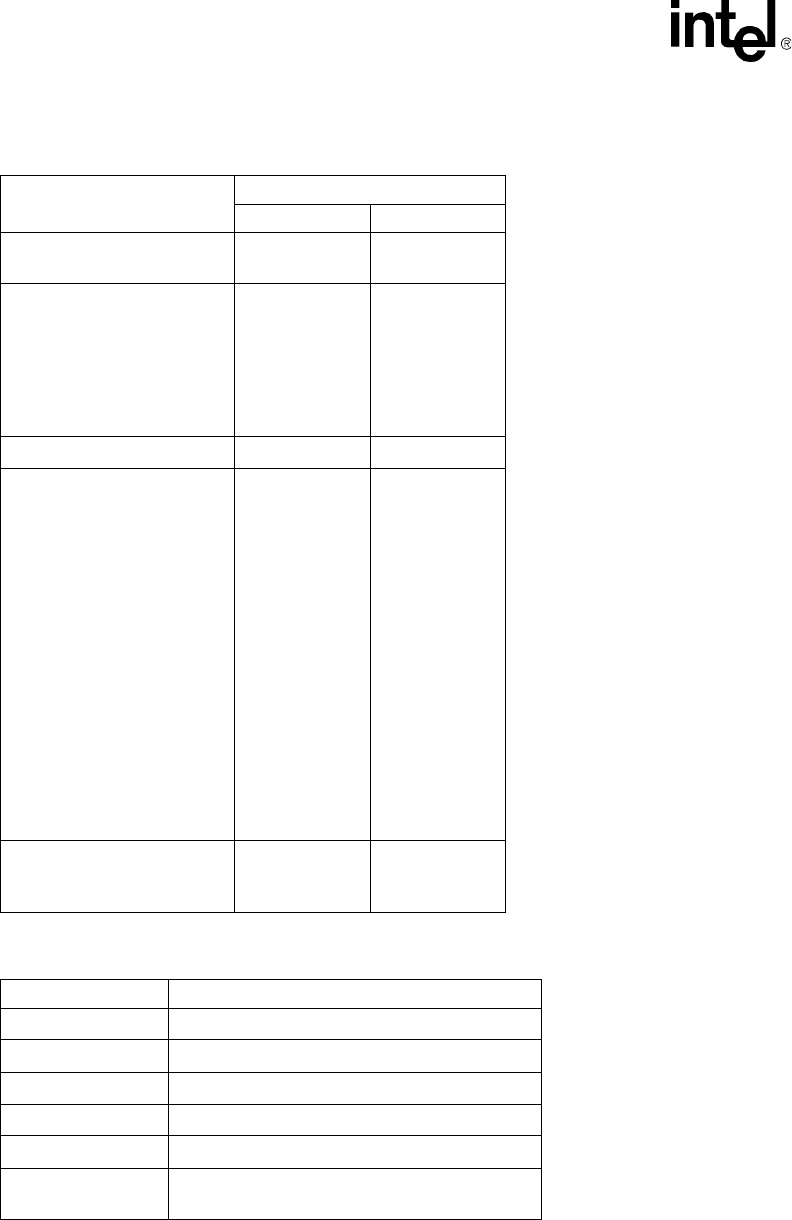

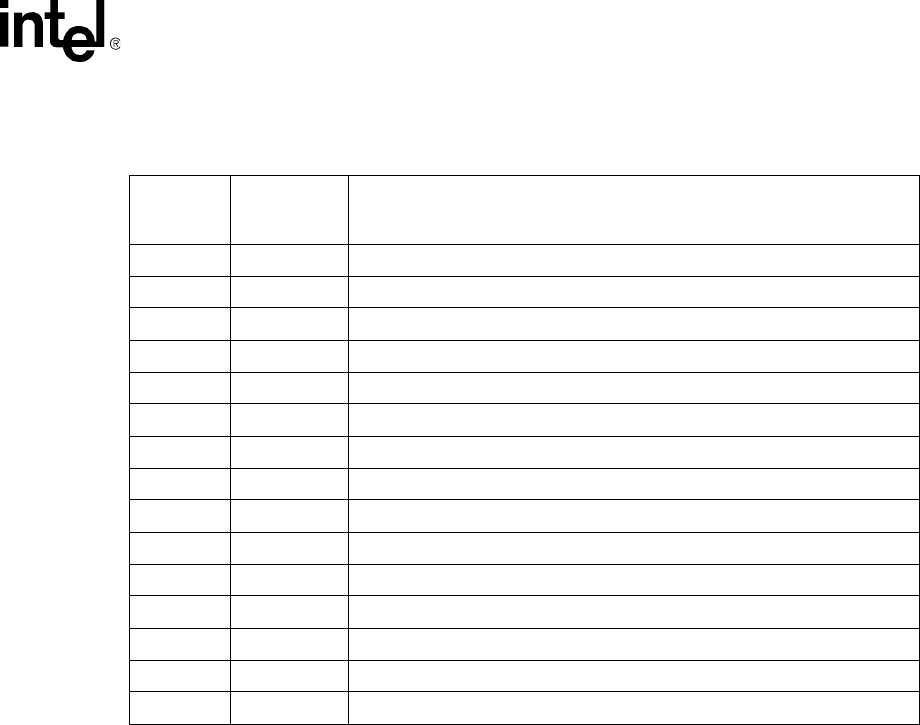

Figures

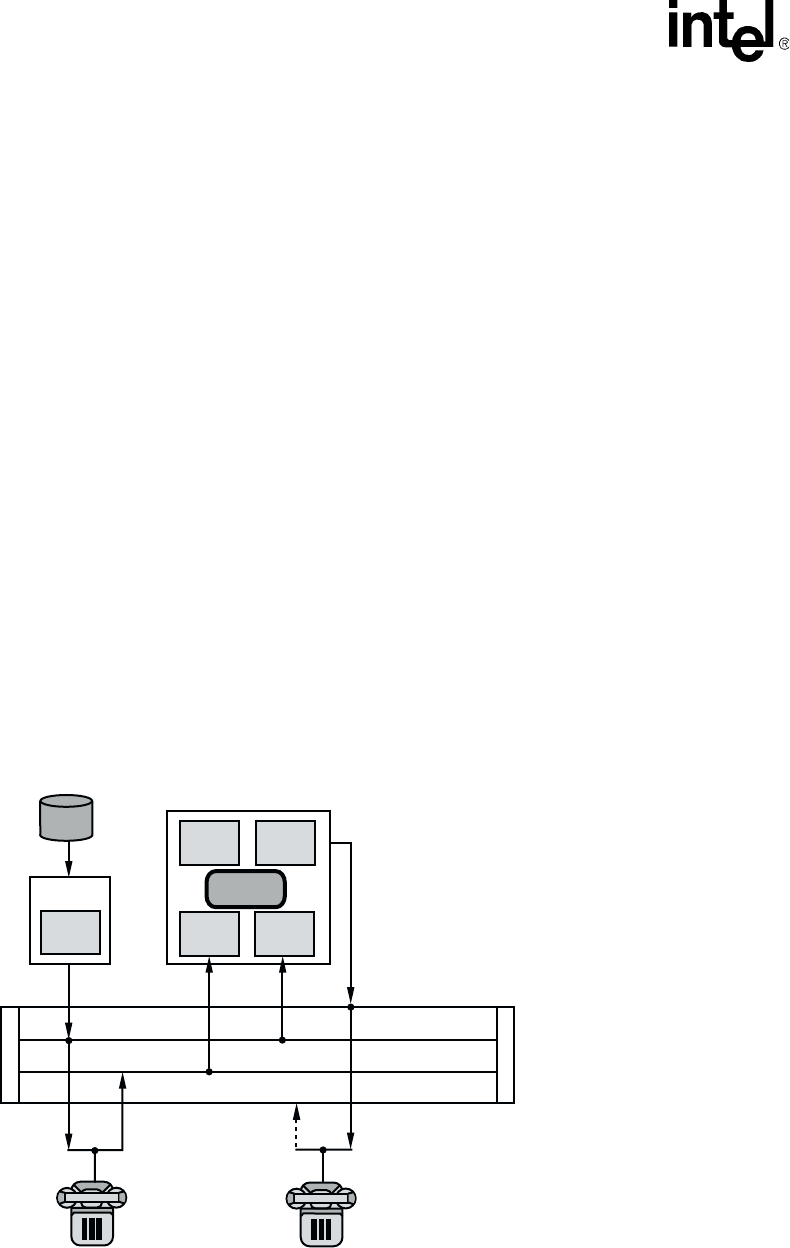

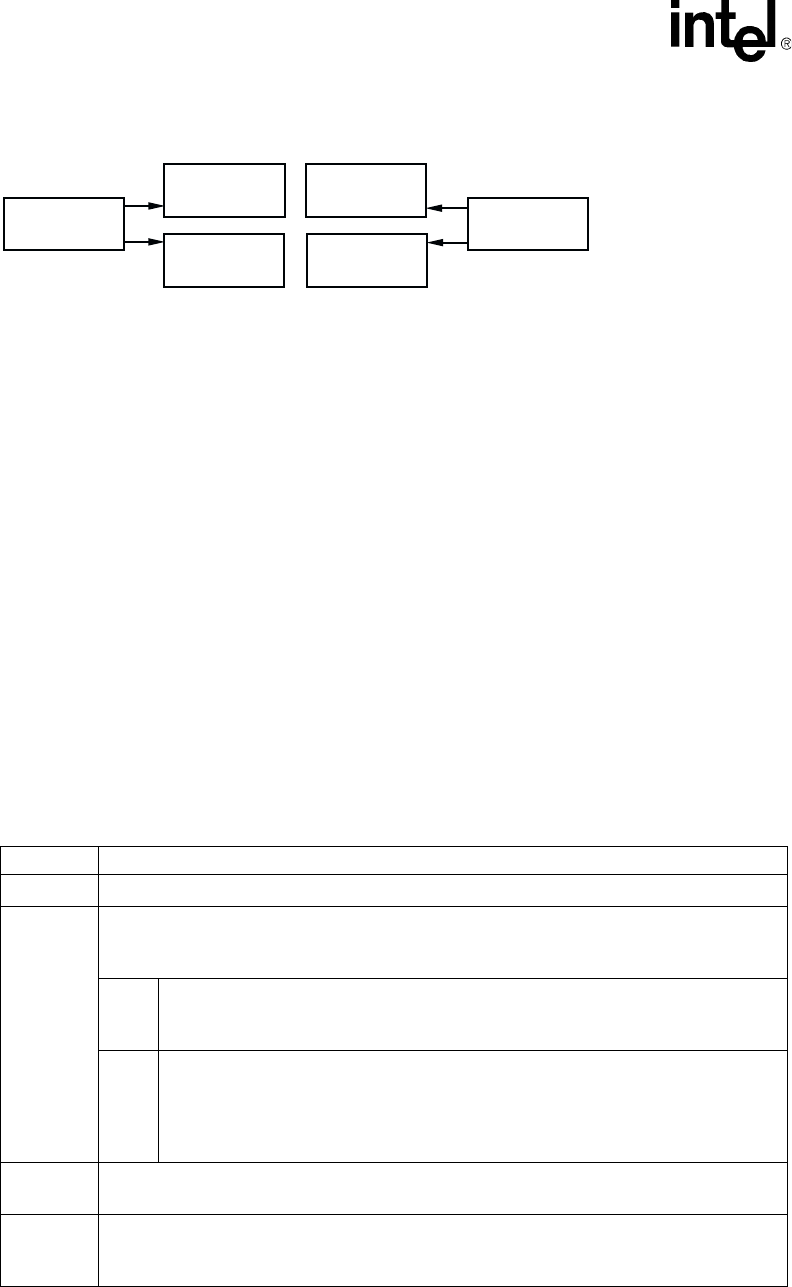

1 Cluster Configurations for Fixed and Flexible Routing . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 35

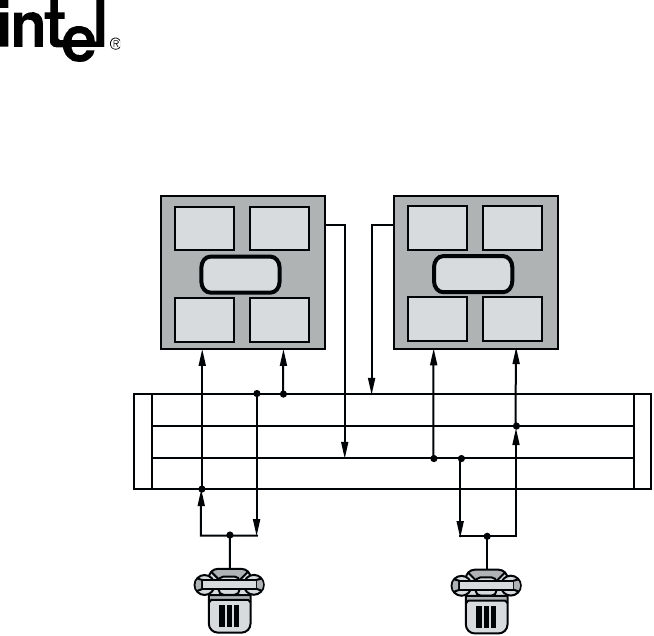

2 Multiple Devices Listening to the Same TDM Bus Time Slot . . . . . . . . . . . . . . . . . . . . . . . . . . . 39

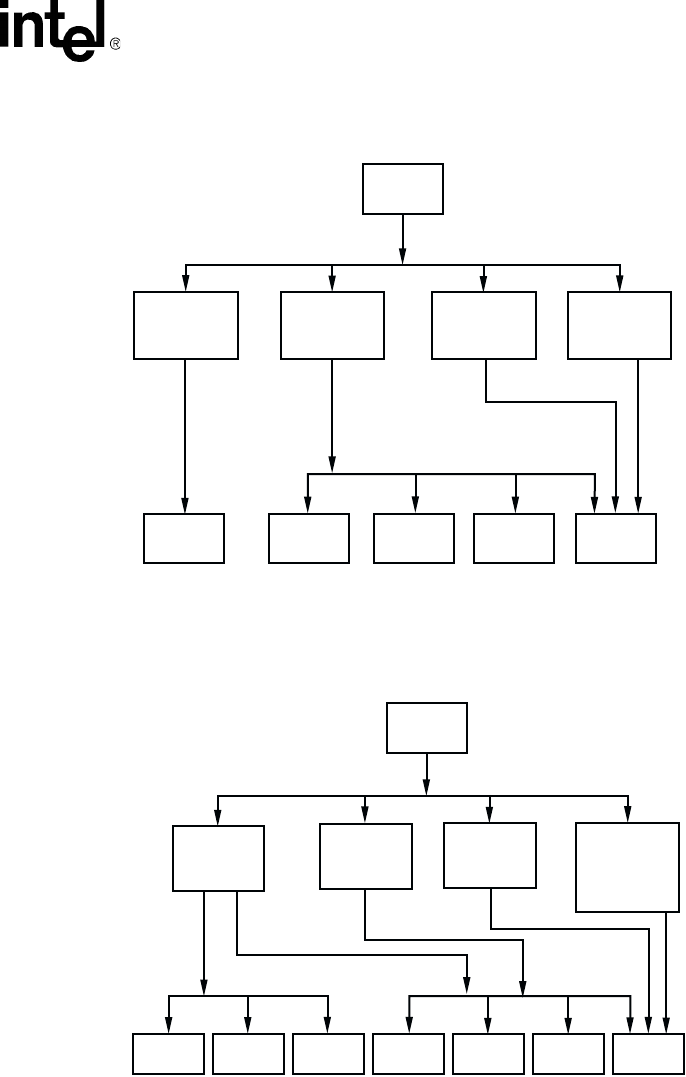

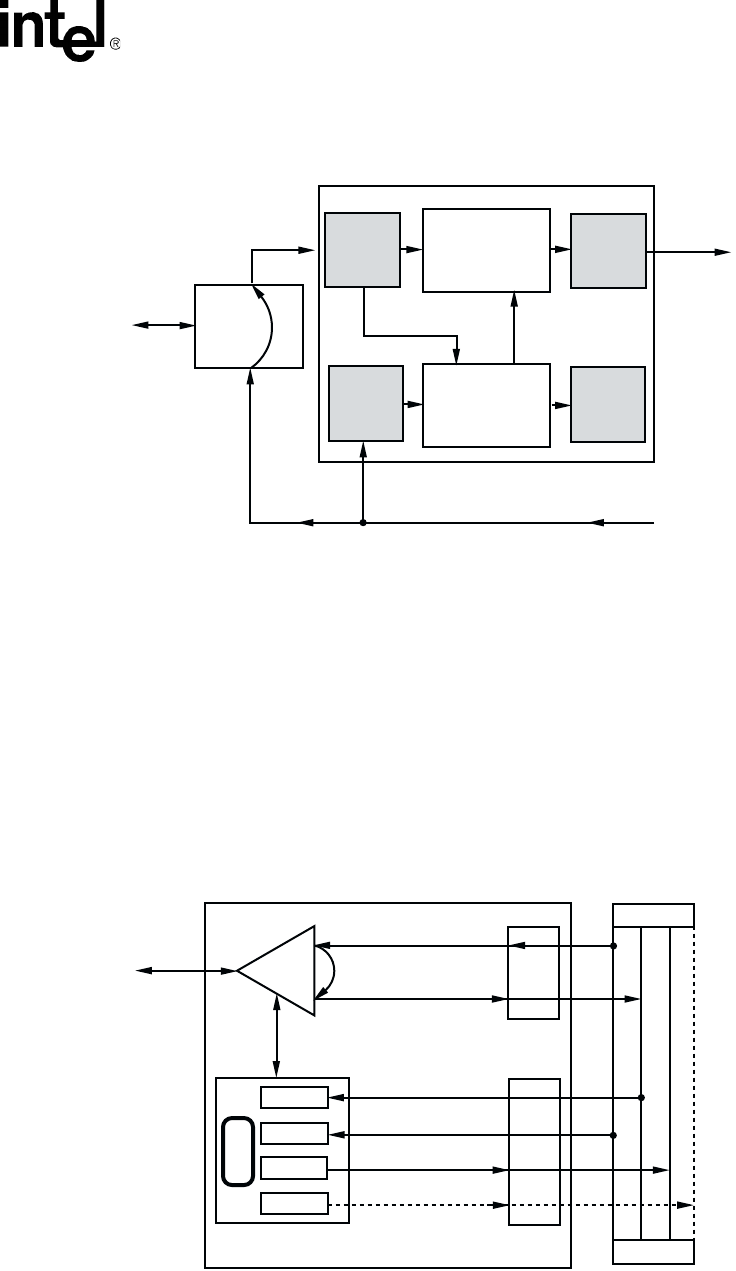

3 Basic Call Progress Analysis Components . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 45

4 PerfectCall Call Progress Analysis Components . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 45

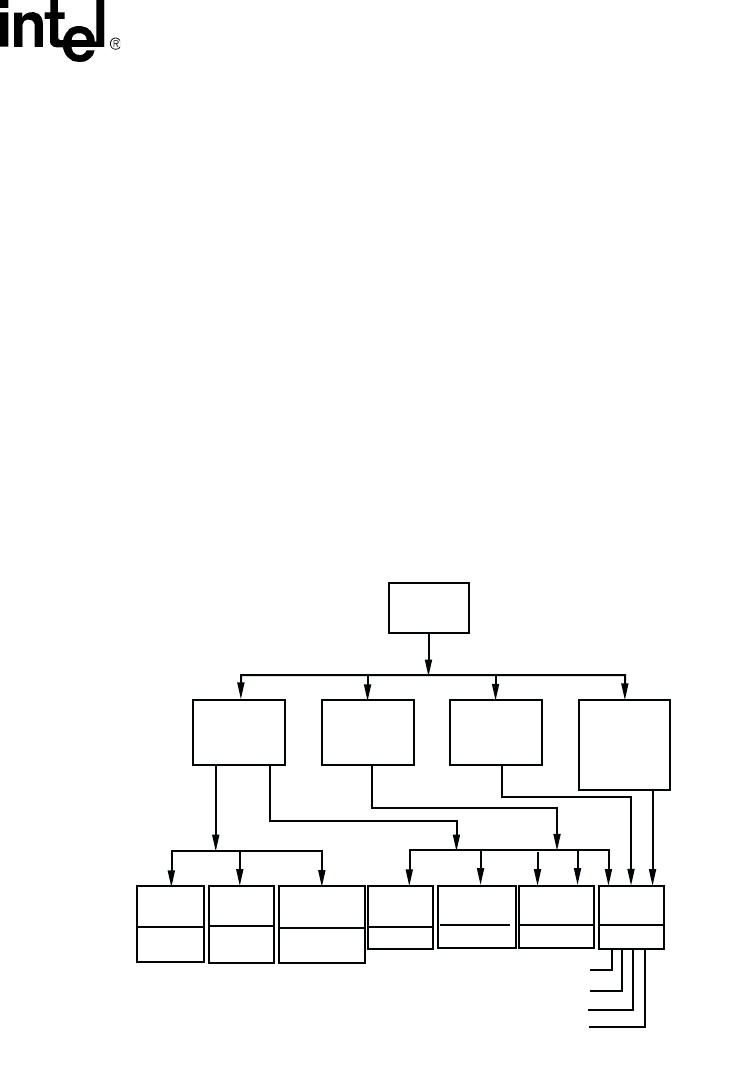

5 Call Outcomes for Call Progress Analysis . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 49

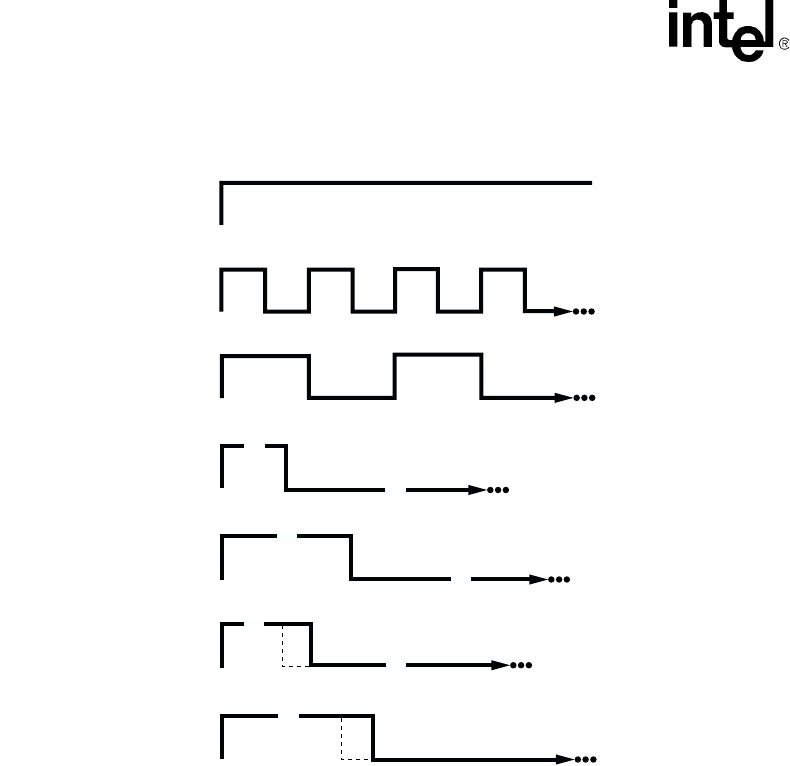

6 A Standard Busy Signal . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 68

7 A Standard Single Ring . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 69

8 A Type of Double Ring . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 69

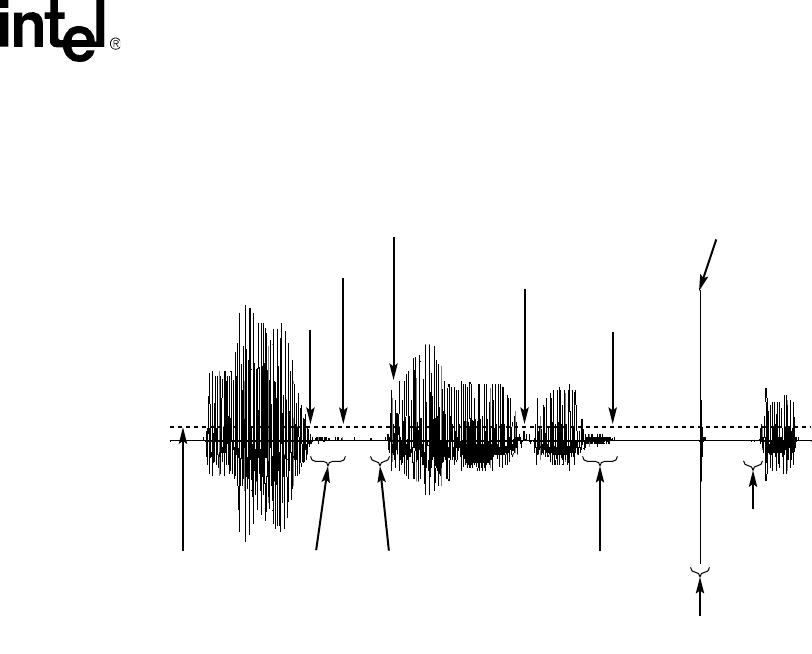

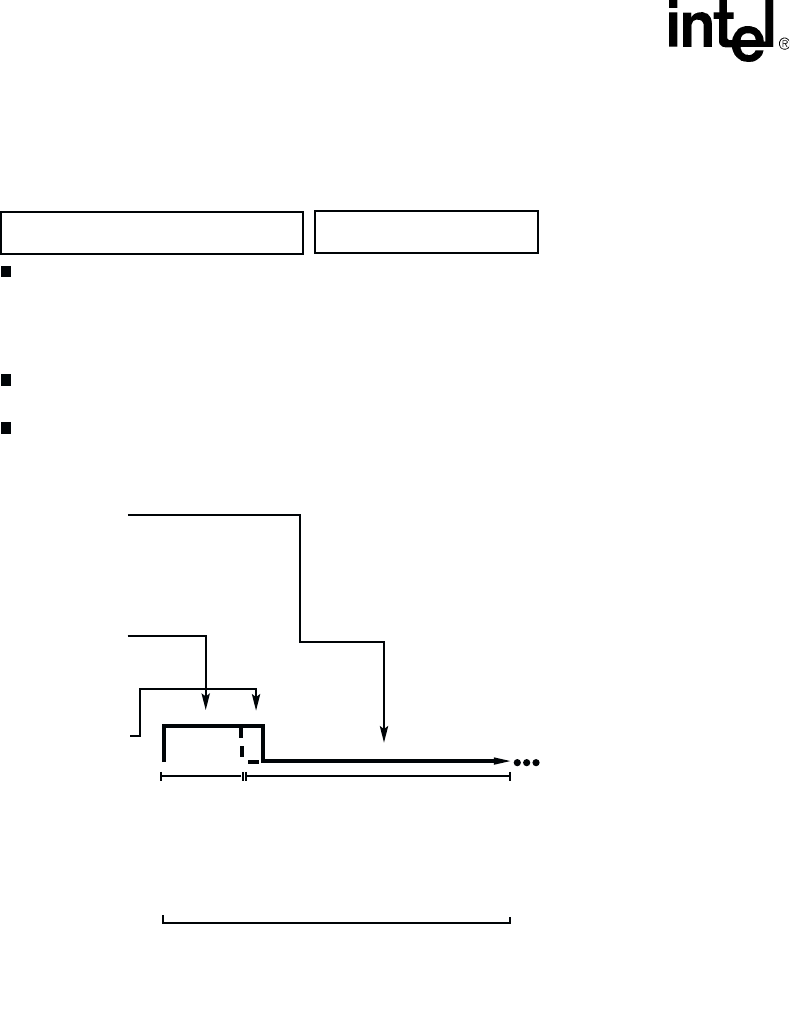

9 Cadence Detection . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 69

10 Elements of Established Cadence . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 70

11 No Ringback Due to Continuous No Signal . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 72

12 No Ringback Due to Continuous Nonsilence . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 73

13 Cadence Detection Salutation Processing . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 75

14 Silence Compressed Record Parameters Illustrated . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 83

15 Echo Canceller with Relevant Input and Output Signals . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 85

16 Echo Canceller Operating over a TDM bus . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 85

17 ECR Bridge Example Diagram . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 89

18 An ECR Play Over the TDM bus . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 92

19 Example of Custom Cadenced Tone Generation. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 142

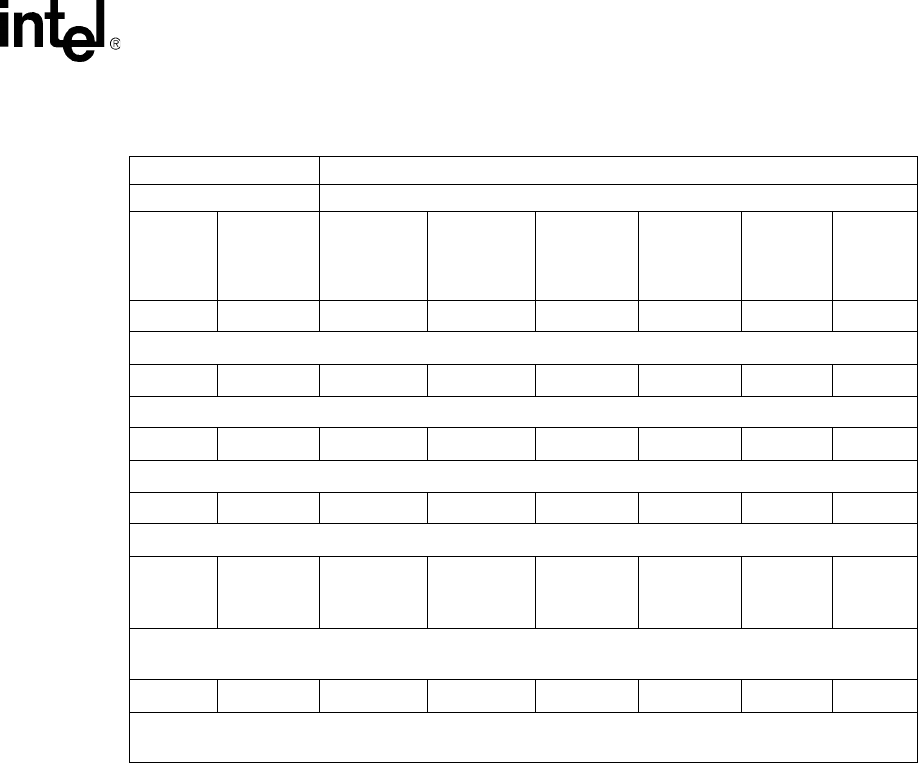

20 Standard PBX Call Progress Signals (Part 1) . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 146

21 Standard PBX Call Progress Signals (Part 2) . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 147

22 Forward and Backward Interregister Signals . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 157

23 Multiple Meanings for R2/MF Signals. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 160

24 R2/MF Compelled Signaling Cycle. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 166

25 Example of R2/MF Signals for 4-digit DDI Application . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 167

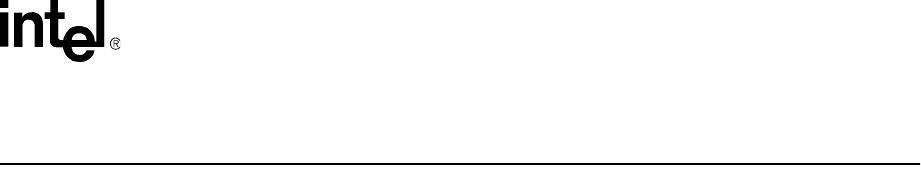

26 Voice and SRL Libraries. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 171

Voice API for Windows Operating Systems Programming Guide – November 2002 9

Contents

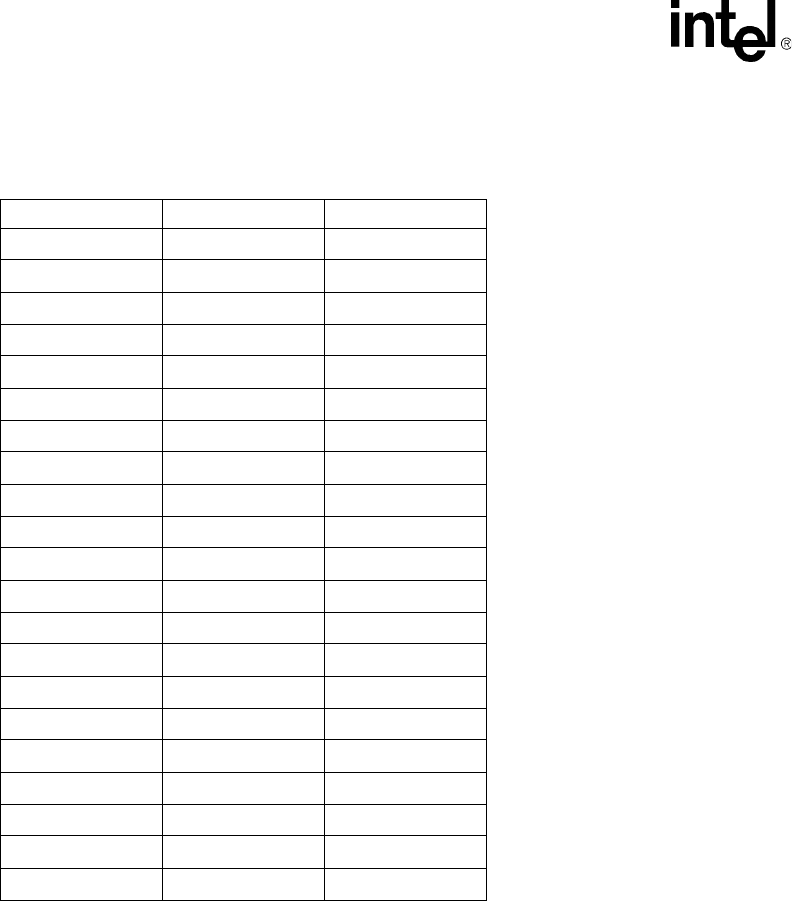

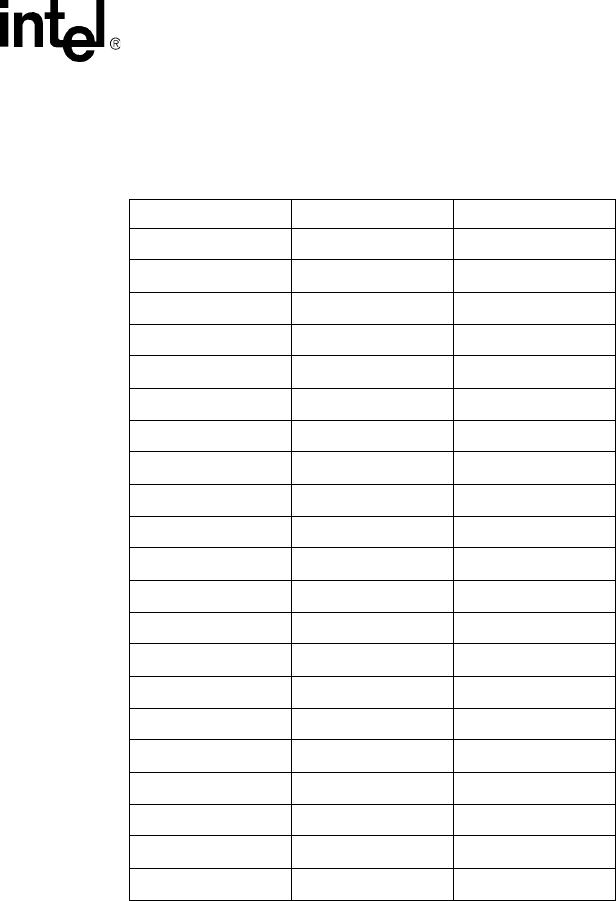

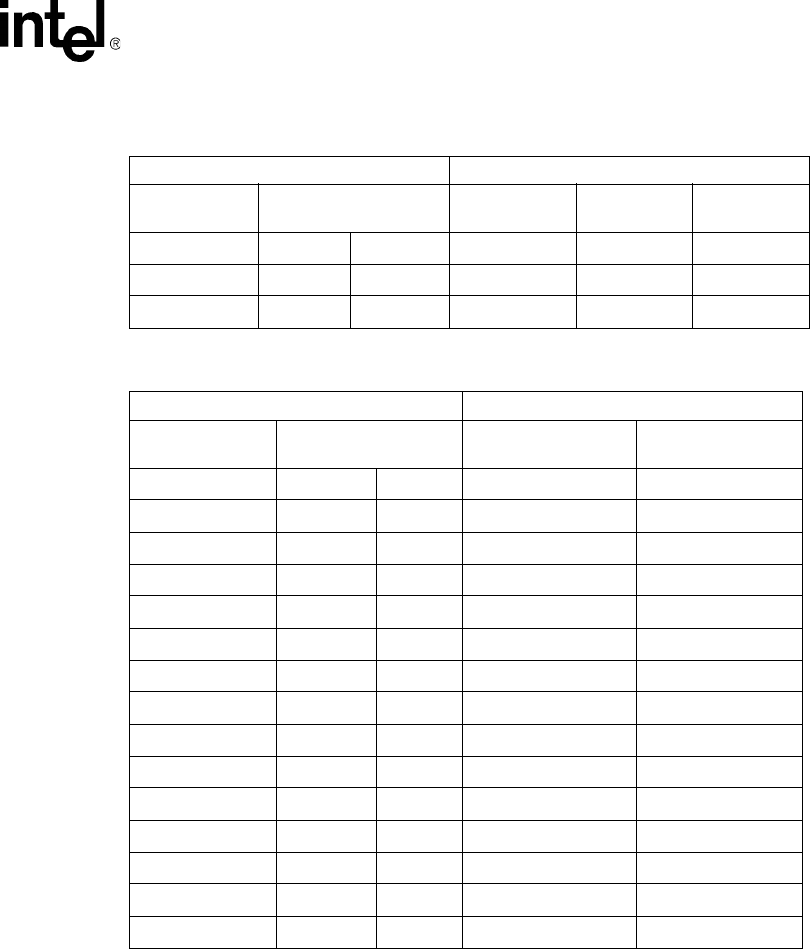

Tables

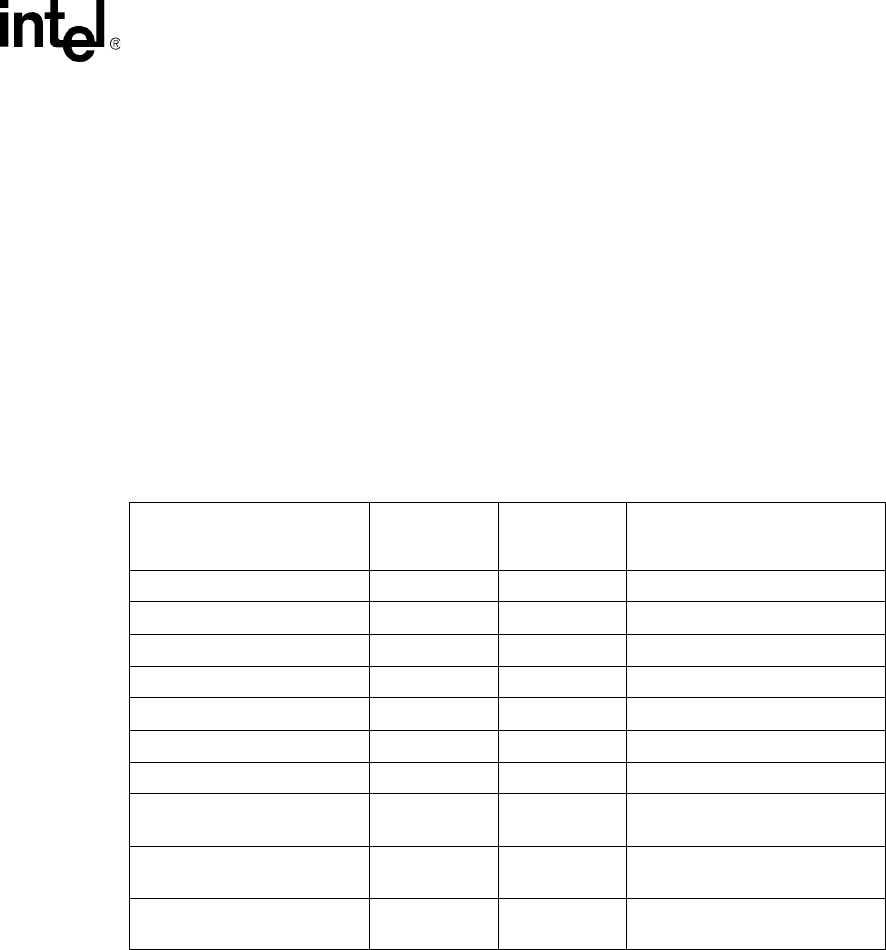

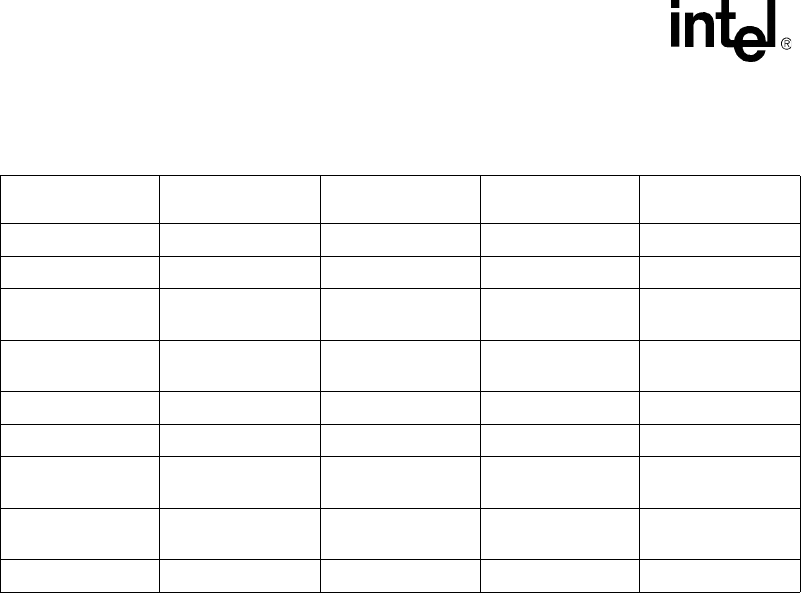

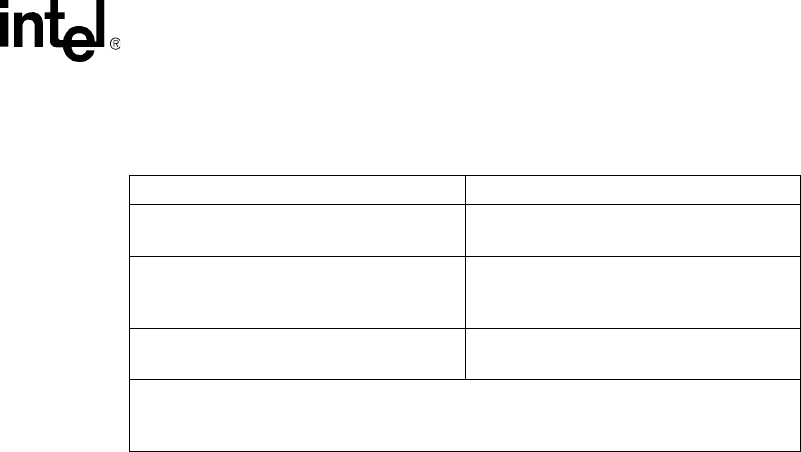

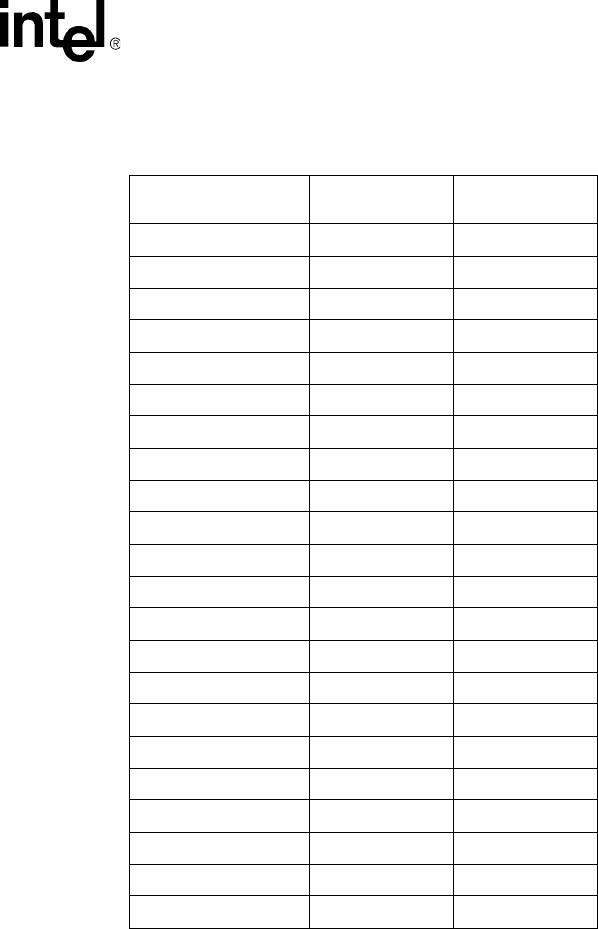

1 Voice Device Inputs for Event Management Functions. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 26

2 Voice Device Returns from Event Management Functions . . . . . . . . . . . . . . . . . . . . . . . . . . . . 26

3 API Function Restrictions in a Fixed Routing Configuration . . . . . . . . . . . . . . . . . . . . . . . . . . . 36

4 Call Progress Analysis Support with dx_dial( ) . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 51

5 Default Call Progress Analysis Tone Definitions (DM3) . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 60

6 Default Call Progress Analysis Tone Definitions (Springware) . . . . . . . . . . . . . . . . . . . . . . . . . 60

7 Special Information Tone Sequences (Springware) . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 62

8 Voice Encoding Methods (DM3 Boards) . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 79

9 Voice Encoding Methods (Springware Boards) . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 80

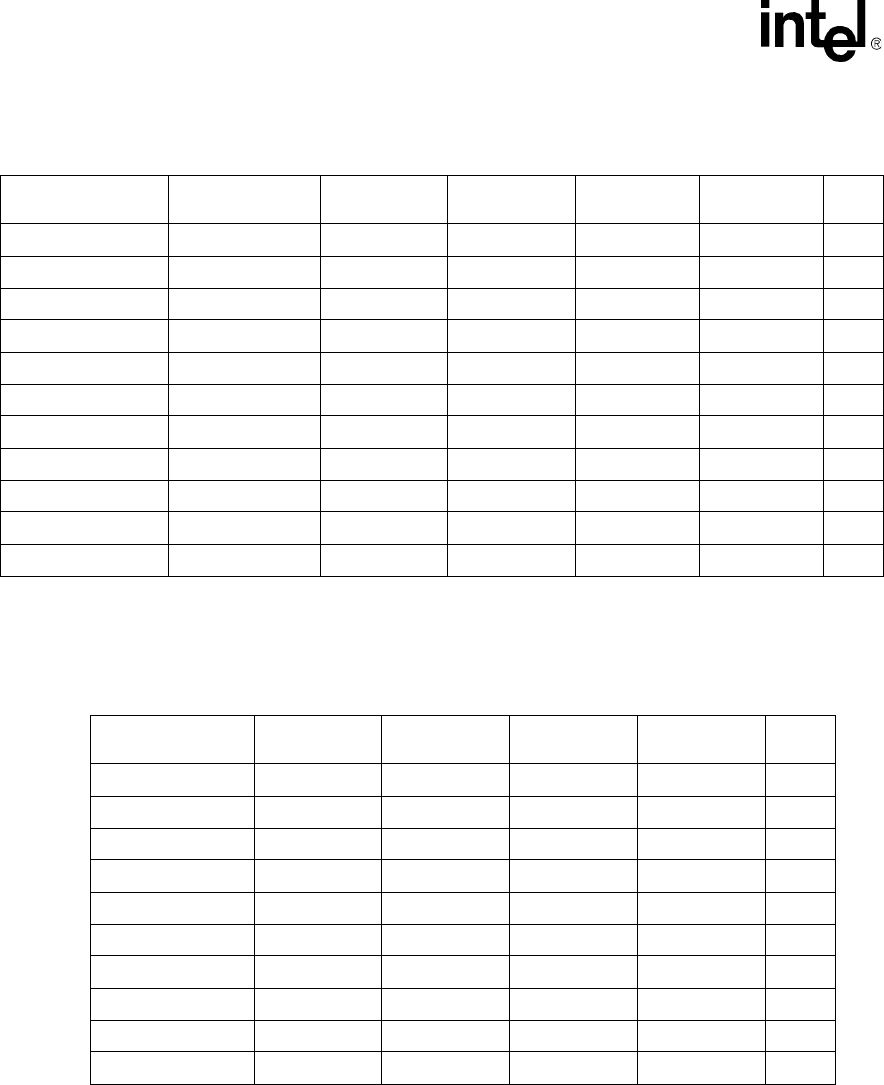

10 Default Speed Modification Table . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 100

11 Default Volume Modification Table . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 101

12 Supported CLASS Caller ID Information . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 118

13 Standard Bell System Network Call Progress Tones . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 132

14 Asynchronous/Synchronous Tone Event Handling . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 133

15 Maximum Memory Available for User-Defined Tone Templates . . . . . . . . . . . . . . . . . . . . . . . 134

16 Maximum Memory Available for Tone Templates for Tone-Creating Voice Features . . . . . . . 134

17 Maximum Memory and Tone Templates (for Dual Tones) . . . . . . . . . . . . . . . . . . . . . . . . . . . 137

18 Standard PBX Call Progress Signals. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 145

19 TN_GENCAD Definitions for Standard PBX Call Progress Signals . . . . . . . . . . . . . . . . . . . . 148

20 Forward Signals, CCITT Signaling System R2/MF tones . . . . . . . . . . . . . . . . . . . . . . . . . . . . 158

21 Backward Signals, CCITT Signaling System R2/MF tones . . . . . . . . . . . . . . . . . . . . . . . . . . . 159

22 Purpose of Signal Groups and Changeover in Meaning . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 160

23 Meanings for R2/MF Group I Forward Signals . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 162

24 Meanings for R2/MF Group II Forward Signals . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 163

25 Meanings for R2/MF Group A Backward Signals . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 164

26 Meanings for R2/MF Group B Backward Signals . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 165


About This Publication

The following topics provide information about this publication:

•Purpose

•Intended Audience

•How to Use This Publication

•Related Information

Purpose

This publication describes voice processing features and provides guidelines for building computer

telephony applications using the voice API on Windows* operating systems. Such applications

include, but are not limited to, call routing, voice messaging, interactive voice response, and call

center applications.

This publication is a companion guide to the Voice API Library Reference, which provides details

on the functions and parameters in the voice library.

Intended Audience

This information is intended for:

•Distributors

•System Integrators

•Toolkit Developers

•Independent Software Vendors (ISVs)

•Value Added Resellers (VARs)

•Original Equipment Manufacturers (OEMs)

•End Users

How to Use This Publication

This document assumes that you are familiar with Windows* operating systems and the C programming language. Use this document together with the following: the Voice API Library Reference, the Standard Runtime Library API Programming Guide, and the Standard Runtime Library API Library Reference.


The information in this guide is organized as follows:

•Chapter 1, “Product Description” introduces the key features of the voice library and provides

a brief description of each feature.

•Chapter 2, “Programming Models” provides a brief overview of supported programming

models.

•Chapter 3, “Device Handling” discusses topics related to devices such as device naming

concepts, how to open and close devices, and how to discover whether a device is Springware

or DM3.

•Chapter 4, “Event Handling” provides information on functions used to handle events.

•Chapter 5, “Error Handling” provides information on handling errors in your application.

•Chapter 6, “Application Development Guidelines” provides programming guidelines and

techniques for developing an application using the voice library. This chapter also discusses

fixed and flexible routing configurations.

•Chapter 7, “Call Progress Analysis” describes the components of call progress analysis in

detail. This chapter also covers differences between Basic Call Progress Analysis and

PerfectCall Call Progress Analysis.

•Chapter 8, “Recording and Playback” discusses playback and recording features, such as

encoding algorithms, play and record API functions, transaction record, and silence

compressed record.

•Chapter 9, “Speed and Volume Control” explains how to control speed and volume of

playback recordings through API functions and data structures.

•Chapter 10, “Send and Receive FSK Data” describes the two-way frequency shift keying

(FSK) feature, the Analog Display Services Interface (ADSI), and API functions for use with

this feature.

•Chapter 11, “Caller ID” describes the caller ID feature, supported formats, and how to enable

it.

•Chapter 12, “Cached Prompt Management” provides information on cached prompts and how

to use cached prompt management in your application.

•Chapter 13, “Global Tone Detection and Generation, and Cadenced Tone Generation”

describes these tone detection and generation features in detail.

•Chapter 14, “Global Dial Pulse Detection” discusses the Global DPD feature, the API

functions for use with this feature, programming guidelines, and example code.

•Chapter 15, “R2/MF Signaling” describes the R2/MF signaling protocol, the API functions for

use with this feature, and programming guidelines.

•Chapter 16, “Syntellect License Automated Attendant” describes Intel® Dialogic® hardware

and software that include a license for the Syntellect Technology Corporation (STC) patent

portfolio.

•Chapter 17, “Building Applications” discusses compiling and linking requirements such as

include files and library files.


Related Information

You can download Intel® Dialogic® documentation (known as the online bookshelf) as part of the

system release software installation or from the Telecom Support Resources website at

http://resource.intel.com/telecom/support/documentation/releases/index.htm.

See the following for more information:

•For details on all voice functions, parameters and data structures in the voice library, see the

Voice API Library Reference.

•For details on the Standard Runtime Library (SRL), supported programming models, and

programming guidelines for building all applications, see the Standard Runtime Library API

Programming Guide. The SRL is a device-independent library that consists of event

management functions and standard attribute functions.

•For details on all functions and data structures in the Standard Runtime Library (SRL), see the Standard Runtime Library API Library Reference.

•For information on the system release, system requirements, software and hardware features,

supported hardware, and release documentation, see the Release Guide for the system release

you are using.

•For details on compatibility issues, restrictions and limitations, known problems, and late-

breaking updates or corrections to the release documentation, see the Release Update.

Be sure to check the Release Update for the system release you are using for any updates or

corrections to this publication. Release Updates are available on the Telecom Support

Resources website at http://resource.intel.com/telecom/support/releases/index.html.

•For details on installing the system software, see the System Release Installation Guide.

•For guidelines on building applications using Global Call software (a common signaling

interface for network-enabled applications, regardless of the signaling protocol needed to

connect to the local telephone network), see the Global Call API Programming Guide.

•For details on all functions and data structures in the Global Call library, see the Global Call

API Library Reference.

•For details on configuration files (including FCD/PCD files) and instructions for configuring

products, see the Configuration Guide for your product or product family.

•For technical support, see http://developer.intel.com/design/telecom/support/. This Technical

Support Web site contains developer support information, downloads, release documentation,

technical notes, application notes, a user discussion forum, and more.


1. Product Description

This chapter provides information on key voice library features and capabilities. The following topics are covered:

•Overview . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 15

•R4 API . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 15

•Call Progress Analysis. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 16

•Tone Generation and Detection Features. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 16

•Dial Pulse Detection . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 17

•Play and Record Features . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 17

•Send and Receive FSK Data . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 19

•Caller ID . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 19

•R2/MF Signaling . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 19

1.1 Overview

The voice software provides a high-level interface to telecom media processing boards from Intel

and is a building block for creating computer telephony applications. It offers a comprehensive set

of features such as dual-tone multifrequency (DTMF) detection, tone signaling, call progress

analysis, caller ID detection, playing and recording that supports a number of encoding methods,

and much more.

The voice software consists of a C language library of functions, device drivers, and firmware.

The voice library is well integrated with other technology libraries provided by Intel such as fax,

conferencing, and continuous speech processing. This architecture enables you to add new

capability to your voice application over time.

For an up-to-date list of products and features supported, see the latest system release guide. For a

list of voice features by product, see

http://www.intel.com/design/network/products/telecom/boards/index.htm.

1.2 R4 API

The term R4 API (“System Software Release 4 Application Programming Interface”) describes the

direct interface used for creating computer telephony application programs. The R4 API is a rich

set of proprietary APIs for building computer telephony applications tailored to hardware products

from Intel. These APIs encompass technologies that include voice, network interface, fax, and

speech. This document describes the voice API.


In addition to original Springware products (also known as earlier-generation products), the R4

API supports a new generation of hardware products that are based on the DM3 mediastream

architecture. Feature differences between these two categories of products are noted.

DM3 boards is a collective name used in this document to refer to products that are based on the Intel® Dialogic® DM3 mediastream architecture. DM3 board names typically are prefaced with “DM,” such as the Intel® NetStructure™ DM/V2400A-PCI. Springware boards refer to boards based on earlier-generation architecture. Springware board names typically are prefaced with “D,” such as the Intel® Dialogic® D/240JCT-T1.

In this document, the term voice API is used to refer to the R4 voice API. The term “R4 for DM3”

or “R4 on DM3” is used to refer to specific aspects of the R4 API interface that relate to support for

DM3 boards.

1.3 Call Progress Analysis

Call progress analysis monitors the progress of an outbound call after it is dialed into the Public

Switched Telephone Network (PSTN).

There are two forms of call progress analysis: basic and PerfectCall. PerfectCall call progress

analysis uses an improved method of signal identification and can detect fax machines and

answering machines. Basic call progress analysis provides backward compatibility for older

applications written before PerfectCall call progress analysis became available.

Note: PerfectCall call progress analysis was formerly called enhanced call analysis.

See Chapter 7, “Call Progress Analysis” for detailed information about this feature.

1.4 Tone Generation and Detection Features

In addition to DTMF and MF tone detection and generation, the following signaling features are

provided by the voice library:

•Global Tone Detection (GTD)

•Global Tone Generation (GTG)

•Cadenced Tone Generation

1.4.1 Global Tone Detection (GTD)

Global tone detection allows you to define single- or dual-frequency tones for detection on a

channel-by-channel basis. Global tone detection and GTD tones are also known as user-defined

tone detection and user-defined tones.

Use global tone detection to detect single- or dual-frequency tones outside the standard DTMF

range of 0-9, a-d, *, and #. The characteristics of a tone can be defined and tone detection can be

enabled using GTD functions and data structures provided in the voice library.


See Chapter 13, “Global Tone Detection and Generation, and Cadenced Tone Generation” for

detailed information about global tone detection.
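To make the idea of a tone definition concrete, the following C sketch models a template as center frequencies plus allowed deviations and checks whether a measured tone falls inside it. The tone_template type and the tone_matches() function are hypothetical illustrations, not part of the voice library; real templates are built and enabled with the GTD functions described in Chapter 13 and the Voice API Library Reference, and the board performs the actual signal analysis that this sketch leaves out.

```c
#include <stdbool.h>

/* Hypothetical tone template: center frequency and allowed deviation for
 * each component, in Hz. A single-frequency template sets freq2 to 0. */
typedef struct {
    unsigned freq1, dev1;
    unsigned freq2, dev2;
} tone_template;

/* True if 'measured' lies within center +/- dev (avoids unsigned underflow). */
static bool within(unsigned measured, unsigned center, unsigned dev)
{
    unsigned lo = center > dev ? center - dev : 0;
    return measured >= lo && measured <= center + dev;
}

/* True if the measured frequencies match the template. For a single tone,
 * pass f2 == 0; for a dual tone, the components may arrive in either order. */
bool tone_matches(const tone_template *t, unsigned f1, unsigned f2)
{
    if (t->freq2 == 0)
        return f2 == 0 && within(f1, t->freq1, t->dev1);
    return (within(f1, t->freq1, t->dev1) && within(f2, t->freq2, t->dev2)) ||
           (within(f2, t->freq1, t->dev1) && within(f1, t->freq2, t->dev2));
}
```

For example, a template of 480 Hz and 620 Hz with a 30 Hz deviation on each component (values chosen for illustration) accepts a measured 490/610 Hz pair but rejects 350/440 Hz.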

1.4.2 Global Tone Generation (GTG)

Global tone generation allows you to define a single- or dual-frequency tone in a tone generation

template and to play the tone on a specified channel.

See Chapter 13, “Global Tone Detection and Generation, and Cadenced Tone Generation” for

detailed information about global tone generation.

1.4.3 Cadenced Tone Generation

Cadenced tone generation is an enhancement to global tone generation. It allows you to generate a

tone with up to 4 single- or dual-tone elements, each with its own on/off duration, which creates the

signal pattern or cadence. You can define your own custom cadenced tone or take advantage of the

built-in set of standard PBX call progress signals, such as dial tone, ringback, and busy.

See Chapter 13, “Global Tone Detection and Generation, and Cadenced Tone Generation” for

detailed information about cadenced tone generation.
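The relationship between tone elements, their on/off durations, and the resulting cadence can be sketched as a small data model. The cad_element and cad_tone types, the helper functions, and the example frequencies and timings are hypothetical; the voice library defines its own structure for cadenced tones (see Chapter 13 and the Voice API Library Reference). The sketch computes the length of one cadence cycle and which element, if any, is sounding at a given moment.

```c
#define MAX_CAD_ELEMENTS 4  /* up to 4 elements per cadence, as described above */

/* Hypothetical cadence element: a single- or dual-tone burst followed by
 * silence. Durations are in milliseconds. */
typedef struct {
    unsigned freq1, freq2;   /* Hz; freq2 == 0 for a single tone */
    unsigned on_ms, off_ms;
} cad_element;

typedef struct {
    unsigned count;                      /* 1..MAX_CAD_ELEMENTS */
    cad_element elem[MAX_CAD_ELEMENTS];
} cad_tone;

/* Illustrative busy cadence: 480 + 620 Hz, 0.5 s on, 0.5 s off. */
static const cad_tone BUSY_EXAMPLE = {1, {{480, 620, 500, 500}}};
/* Illustrative double ring: two 0.4 s bursts 0.2 s apart, then 2 s of silence. */
static const cad_tone DOUBLE_RING_EXAMPLE =
    {2, {{400, 450, 400, 200}, {400, 450, 400, 2000}}};

/* Length of one full repetition of the pattern, in milliseconds. */
unsigned cad_period_ms(const cad_tone *c)
{
    unsigned total = 0;
    for (unsigned i = 0; i < c->count; i++)
        total += c->elem[i].on_ms + c->elem[i].off_ms;
    return total;
}

/* Index of the element sounding at time t_ms, or -1 during silence. */
int cad_active_element(const cad_tone *c, unsigned t_ms)
{
    unsigned period = cad_period_ms(c);
    if (period == 0)
        return -1;
    unsigned t = t_ms % period;          /* position within the current cycle */
    for (unsigned i = 0; i < c->count; i++) {
        if (t < c->elem[i].on_ms)
            return (int)i;               /* inside this element's "on" time */
        t -= c->elem[i].on_ms;
        if (t < c->elem[i].off_ms)
            return -1;                   /* inside this element's "off" time */
        t -= c->elem[i].off_ms;
    }
    return -1;
}
```

Because the position is taken modulo the cycle length, the same lookup works for any point in a tone that repeats indefinitely.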

1.5 Dial Pulse Detection

Dial pulse detection (DPD) allows applications to detect dial pulses from rotary or pulse phones by

detecting the audible clicks produced when a number is dialed, and to use these clicks as if they

were DTMF digits. Global dial pulse detection, called global DPD, is a software-based dial pulse

detection method that can use country-customized parameters for extremely accurate performance.

See Chapter 14, “Global Dial Pulse Detection” for more information about this feature.
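The core idea behind dial pulse detection, grouping clicks into digits, can be sketched as follows. The sketch assumes the common decadic convention (N pulses represent digit N, and 10 pulses represent 0) and a single inter-digit gap threshold; the function names and parameters are hypothetical. Country-specific conventions and timing are exactly what global DPD customizes, so treat the values here as placeholders.

```c
#include <stddef.h>

/* Decadic mapping (assumed): N pulses = digit N for 1..9, 10 pulses = 0. */
static char pulses_to_digit(unsigned pulses)
{
    if (pulses >= 1 && pulses <= 9)
        return (char)('0' + pulses);
    if (pulses == 10)
        return '0';
    return '?';                       /* count outside the decadic range */
}

/* Groups click timestamps (ms, ascending) into digits: a gap of at least
 * interdigit_ms between two clicks ends the current digit. Writes a
 * NUL-terminated string to out and returns the number of digits. */
size_t decode_dial_pulses(const unsigned *click_ms, size_t n,
                          unsigned interdigit_ms, char *out, size_t out_len)
{
    size_t len = 0;
    unsigned count = 0;

    if (out_len == 0)
        return 0;
    for (size_t i = 0; i < n; i++) {
        /* count > 0 implies i > 0, so click_ms[i - 1] is valid */
        if (count > 0 && click_ms[i] - click_ms[i - 1] >= interdigit_ms) {
            if (len + 1 < out_len)
                out[len++] = pulses_to_digit(count);
            count = 0;
        }
        count++;
    }
    if (count > 0 && len + 1 < out_len)
        out[len++] = pulses_to_digit(count);
    out[len] = '\0';
    return len;
}
```

With clicks every 100 ms and a 400 ms inter-digit threshold, three clicks followed by a pause and then ten clicks decode to "30".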

1.6 Play and Record Features

The following play and record features are provided by the voice library:

•Play and Record Functions

•Speed and Volume Control

•Transaction Record

•Silence Compressed Record

•Echo Cancellation Resource


1.6.1 Play and Record Functions

The voice library includes several functions and data structures used for recording and playing

audio data. These allow you to digitize and store human voice, then retrieve, convert and play this

digital information.

For more information about the play and record feature, see Chapter 8, “Recording and Playback”.

For detailed information about play and record functions, see the Voice API Library Reference.

Telecom media processing boards from Intel support many well-known voice encoding methods or

algorithms. For more information about these voice encoding methods or algorithms, see

Section 8.5, “Voice Encoding Methods”, on page 79.

1.6.2 Speed and Volume Control

The speed and volume control feature allows you to control the speed and volume of a message being played on a channel, for example, in response to a DTMF digit entered by the caller.

Several functions and data structures can be used to control speed and volume of play on a channel.

For more information, see Chapter 9, “Speed and Volume Control”.
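One way an application can handle caller-driven adjustment is to keep a position in a speed or volume table, move it in response to digits, and clamp it to the table's bounds. The sketch below shows only that bookkeeping; the 21-entry table size, the digit assignments, and sv_apply_digit() are hypothetical, and the voice library's own speed and volume tables and adjustment mechanisms are described in Chapter 9.

```c
/* Hypothetical adjustment table: index 0 is the minimum setting,
 * SV_TABLE_SIZE - 1 the maximum, with the normal setting in the middle. */
#define SV_TABLE_SIZE 21
#define SV_NORMAL     10

/* Applies one DTMF-driven adjustment: '1' steps up, '4' steps down,
 * '7' resets to normal; other digits leave the position unchanged.
 * The result is clamped to the table bounds. */
int sv_apply_digit(int pos, char digit)
{
    switch (digit) {
    case '1': pos++;            break;
    case '4': pos--;            break;
    case '7': pos = SV_NORMAL;  break;
    default:                    break;
    }
    if (pos < 0)
        pos = 0;
    if (pos > SV_TABLE_SIZE - 1)
        pos = SV_TABLE_SIZE - 1;
    return pos;
}
```

Clamping rather than wrapping means a caller who keeps pressing the same key simply stays at the fastest (or loudest) setting.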

1.6.3 Transaction Record

The transaction record feature allows voice activity on two channels to be summed and stored in a

single file, or in a combination of files, devices, and memory. This feature is useful in call center

applications where it is necessary to archive a verbal transaction or record a live conversation.

See Chapter 8, “Recording and Playback” for more information on the transaction record feature.
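The summing step at the heart of transaction record can be illustrated with 16-bit linear PCM samples. This is only a sketch of the arithmetic under that assumption: on the boards the summing is performed for you, the recorded data may use any supported encoding, and mix_two_channels() is a hypothetical name.

```c
#include <stddef.h>
#include <stdint.h>

/* Sums two 16-bit linear PCM streams sample by sample into out,
 * saturating to the int16_t range instead of wrapping around. */
void mix_two_channels(const int16_t *a, const int16_t *b, int16_t *out, size_t n)
{
    for (size_t i = 0; i < n; i++) {
        int32_t s = (int32_t)a[i] + (int32_t)b[i];  /* widen to avoid overflow */
        if (s > INT16_MAX)
            s = INT16_MAX;
        else if (s < INT16_MIN)
            s = INT16_MIN;
        out[i] = (int16_t)s;
    }
}
```

Clamping avoids the harsh distortion that plain integer wrap-around would produce when both parties speak loudly at the same time.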

1.6.4 Silence Compressed Record

The silence compressed record (SCR) feature enables recording with silent pauses eliminated. This

results in smaller recorded files with no loss of intelligibility.

When the audio level stays at or below the silence threshold for a minimum duration of time, silence compression begins. If a short burst of noise (glitch) is detected, compression does not end unless the glitch is longer than a specified period of time.

See Chapter 8, “Recording and Playback” for more information.
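The start and end rules for silence compression form a small state machine. The sketch below applies them to a sequence of per-frame audio levels and marks which frames would be written to the file. Frame-based levels, the parameter names, and scr_mark_frames() itself are assumptions made for illustration; the actual silence compressed record parameters are set through the voice library (see Chapter 8).

```c
#include <stdbool.h>
#include <stddef.h>

/* level[i]    : hypothetical per-frame audio level
 * threshold   : level at or below which a frame counts as silence
 * min_silence : consecutive silent frames needed before compression starts
 * max_glitch  : nonsilent bursts of up to this many frames do not end compression
 * keep[i]     : output, true if frame i is written to the file
 * Returns the number of frames kept. */
size_t scr_mark_frames(const unsigned *level, size_t n, unsigned threshold,
                       size_t min_silence, size_t max_glitch, bool *keep)
{
    bool compressing = false;
    size_t silent_run = 0, burst = 0, kept = 0;

    for (size_t i = 0; i < n; i++) {
        bool silent = level[i] <= threshold;

        if (!compressing) {
            keep[i] = true;                         /* normal recording */
            silent_run = silent ? silent_run + 1 : 0;
            if (silent_run >= min_silence) {
                compressing = true;                 /* drop what follows */
                burst = 0;
            }
        } else if (silent) {
            keep[i] = false;                        /* silence is dropped */
            burst = 0;                              /* any glitch was short */
        } else {
            keep[i] = false;                        /* possible glitch */
            if (++burst > max_glitch) {
                /* Too long to be a glitch: end compression and keep the burst. */
                for (size_t j = i + 1 - burst; j <= i; j++)
                    keep[j] = true;
                compressing = false;
                silent_run = 0;
                burst = 0;
            }
        }
    }
    for (size_t i = 0; i < n; i++)
        kept += keep[i] ? 1 : 0;
    return kept;
}
```

Frames of a burst that turns out to be longer than the glitch limit are restored, so the start of resumed speech is not clipped.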


1.6.5 Echo Cancellation Resource

The echo cancellation resource (ECR) feature enables a voice channel to dynamically perform echo

cancellation on any external TDM bus time slot signal.

Note: The ECR feature has been replaced with continuous speech processing (CSP). Although the CSP

API is related to the voice API, it is provided as a separate product. The continuous speech

processing software is a significant enhancement to ECR. The continuous speech processing

library provides many features such as high-performance echo cancellation, voice energy detection,

barge-in, voice event signaling, pre-speech buffering, full-duplex operation and more. For more

information on this API, see the Continuous Speech Processing documentation.

See Chapter 8, “Recording and Playback” for a detailed description of the ECR feature.

1.7 Send and Receive FSK Data

The send and receive frequency shift keying (FSK) data interface is used for the Analog Display Services Interface (ADSI). Frequency shift keying is a frequency modulation technique for sending digital data over voice-band telephone lines. ADSI allows information to be transmitted for display on a display-based telephone connected to an analog loop-start line, and allows SMS messages to be stored and forwarded in the Public Switched Telephone Network (PSTN). The telephone must be a true ADSI-compliant device.

See Chapter 10, “Send and Receive FSK Data” for a summary of the ADSI protocol and directions

for implementing ADSI support using voice library functions.
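To show how digital data becomes a frequency shift keyed signal, the sketch below frames one byte for asynchronous transmission and lists the tone sent during each bit period. It assumes Bell 202 modulation (1200 Hz for a mark, or binary 1, and 2200 Hz for a space, or binary 0) with a conventional start bit, eight data bits sent least significant bit first, and a stop bit. The function is hypothetical; the voice library functions described in Chapter 10 handle modulation for you.

```c
#include <stddef.h>
#include <stdint.h>

#define MARK_HZ  1200u  /* binary 1 (Bell 202, assumed) */
#define SPACE_HZ 2200u  /* binary 0 (Bell 202, assumed) */

/* Frames one byte asynchronously (start bit 0, 8 data bits LSB first,
 * stop bit 1) and writes the tone frequency for each of the 10 bit
 * periods into freq_hz. Returns the number of bit periods written. */
size_t fsk_frame_byte(uint8_t byte, unsigned freq_hz[10])
{
    size_t k = 0;
    freq_hz[k++] = SPACE_HZ;                          /* start bit */
    for (int bit = 0; bit < 8; bit++)
        freq_hz[k++] = ((byte >> bit) & 1u) ? MARK_HZ : SPACE_HZ;
    freq_hz[k++] = MARK_HZ;                           /* stop bit */
    return k;
}
```

A real modulator would then generate a phase-continuous sine wave at each listed frequency for one bit period; that step is omitted here.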

1.8 Caller ID

An application can enable the caller ID feature on specific channels to process caller ID

information as it is received with an incoming call. Caller ID information can include the calling

party’s directory number (DN), the date and time of the call, and the calling party’s subscriber

name.

See Chapter 11, “Caller ID” for more information about this feature.
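On the line, caller ID data typically arrives as a short message whose fields carry the items listed above. The sketch below assumes the multiple data message format (MDMF) defined in Telcordia GR-30-CORE: the message starts with type 0x80 and a length byte, each field is a parameter type, a length, and a value, and a final checksum byte makes all bytes sum to zero modulo 256. The parameter codes shown come from that format; the functions are hypothetical, and the voice library's caller ID functions (see Chapter 11) remain the supported way to retrieve these fields.

```c
#include <stddef.h>
#include <stdint.h>
#include <string.h>

/* MDMF message type and parameter codes (Telcordia GR-30-CORE; assumed). */
enum { CID_MDMF = 0x80, CID_DATE_TIME = 0x01, CID_NUMBER = 0x02, CID_NAME = 0x07 };

/* True if all bytes of the message, checksum included, sum to 0 mod 256. */
int cid_checksum_ok(const uint8_t *msg, size_t len)
{
    uint8_t sum = 0;
    for (size_t i = 0; i < len; i++)
        sum = (uint8_t)(sum + msg[i]);
    return len > 0 && sum == 0;
}

/* Copies parameter 'type' from an MDMF message into out as a NUL-terminated
 * string. Returns its length, or -1 if absent or the message is malformed. */
int cid_get_param(const uint8_t *msg, size_t len, uint8_t type,
                  char *out, size_t out_len)
{
    if (len < 3 || msg[0] != CID_MDMF)
        return -1;
    size_t end = 2 + (size_t)msg[1];      /* index just past the last parameter */
    if (end + 1 > len)                    /* room for the checksum byte */
        return -1;
    for (size_t i = 2; i + 2 <= end; ) {
        size_t plen = msg[i + 1];
        if (i + 2 + plen > end)
            return -1;
        if (msg[i] == type) {
            if (plen + 1 > out_len)
                return -1;
            memcpy(out, &msg[i + 2], plen);
            out[plen] = '\0';
            return (int)plen;
        }
        i += 2 + plen;                    /* skip to the next parameter */
    }
    return -1;
}
```

Verifying the checksum before extracting fields guards against acting on a message corrupted by line noise.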

1.9 R2/MF Signaling

R2/MF signaling is an international signaling system that is used in Europe and Asia to permit the

transmission of numerical and other information relating to the called and calling subscribers’

lines.

R2/MF signaling is typically accomplished through the Global Call API. For more information, see the Global Call documentation set. Chapter 15, “R2/MF Signaling”, is provided for reference only; see it for more information about R2/MF signaling and how to use it with Springware boards.
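R2/MF signals are formed from two-out-of-six frequency combinations, which yields 15 distinct signals in each direction. The sketch below assumes the CCITT (ITU-T Q.441) frequency sets, 1380 to 1980 Hz in 120 Hz steps for forward signals and 1140 down to 540 Hz for backward signals, and numbers the pairs in the conventional order. The array and function names are hypothetical; Chapter 15 lists the combinations as used with the voice library.

```c
/* Frequencies f0..f5 in Hz, per CCITT Q.441 (assumed for this sketch). */
static const unsigned R2_FORWARD_HZ[6]  = {1380, 1500, 1620, 1740, 1860, 1980};
static const unsigned R2_BACKWARD_HZ[6] = {1140, 1020,  900,  780,  660,  540};

/* Finds the frequency pair for R2/MF signal 1..15. Pairs are numbered by
 * the higher frequency index first, then the lower one:
 * 1 = f0+f1, 2 = f0+f2, 3 = f1+f2, 4 = f0+f3, ..., 15 = f4+f5.
 * Writes both frequencies and returns 0, or returns -1 if out of range. */
int r2_signal_freqs(int signal, int backward, unsigned *fa, unsigned *fb)
{
    const unsigned *tab = backward ? R2_BACKWARD_HZ : R2_FORWARD_HZ;
    int n = 0;
    for (int hi = 1; hi < 6; hi++)
        for (int lo = 0; lo < hi; lo++)
            if (++n == signal) {
                *fa = tab[lo];
                *fb = tab[hi];
                return 0;
            }
    return -1;
}
```

Since C(6, 2) = 15, the nested loops visit every pair exactly once, which is why no more than 15 signals exist per direction.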


1.10 TDM Bus Routing

Time division multiplexing (TDM) is a technique for transmitting a number of separate digitized signals simultaneously over a communication medium. In this document, the term TDM bus includes both the CT Bus and the SCbus.

The CT Bus is an implementation of the computer telephony bus standard developed by the

Enterprise Computer Telephony Forum (ECTF) and accepted industry-wide. The H100 hardware

specification covers CT Bus implementation using the PCI form factor. The H110 hardware

specification covers CT Bus implementation using the CompactPCI (cPCI) form factor. The CT

Bus has 4096 bi-directional time slots.

The SCbus or signal computing bus connects Signal Computing System Architecture (SCSA)

resources. The SCbus has 1024 bi-directional time slots.

A TDM bus connects voice, telephone network interface, fax, and other technology resource

boards together. TDM bus boards are treated as board devices with on-board voice and/or

telephone network interface devices that are identified by a board and channel (time slot for digital

network channels) designation, such as a voice channel, analog channel, or digital channel.

For information on TDM routing functions, see the Voice API Library Reference.

Note: When you see a reference to the SCbus or SCbus routing, the information also applies to the CT

Bus on DM3 products. That is, the physical interboard connection can be either SCbus or CT Bus.

The SCbus protocol is used and the TDM routing API (previously called the SCbus routing API)

applies to all the boards regardless of whether they use an SCbus or CT Bus physical interboard

connection.


2. Programming Models

This chapter briefly discusses the Standard Runtime Library and supported programming models:

•Standard Runtime Library . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 21

•Asynchronous Programming Models . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 21

•Synchronous Programming Model . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 21

2.1 Standard Runtime Library

The Standard Runtime Library (SRL) provides a set of common system functions that are device

independent and are applicable to all Intel® Dialogic® devices. The SRL consists of a data

structure, event management functions, device management functions (called standard attribute

functions), and device mapper functions. You can use the SRL to simplify application

development, such as by writing common event handlers to be used by all devices.

When developing voice processing applications, refer to the Standard Runtime Library

documentation in tandem with the voice library documentation. For more information on the

Standard Runtime Library, see the Standard Runtime Library API Library Reference and

Programming Guide.

2.2 Asynchronous Programming Models

Asynchronous programming enables a single program to control multiple voice channels within a

single process. This allows the development of complex applications where multiple tasks must be

coordinated simultaneously.

The asynchronous programming model uses functions that do not block thread execution; that is, the function returns immediately while the requested operation continues in the background. A Standard Runtime Library (SRL) event later indicates function completion.

Generally, if you are building applications that must support any significant channel density, you should use the asynchronous programming model to develop field solutions.

For complete information on asynchronous programming models, see the Standard Runtime

Library API Programming Guide.
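The shape of an asynchronous application can be sketched without the real libraries: one loop starts an operation on every channel, then handles completion events as they arrive and routes each one to its channel's state. Everything here is a hypothetical stand-in: start_play() plays the role of a non-blocking voice function, and next_event() plays the role of an SRL event-wait call such as sr_waitevt(). In this simulation every operation completes by queuing an event right away, which is enough to show the dispatch pattern but not real timing.

```c
#include <stddef.h>

/* Hypothetical per-channel states for a simple "play a prompt" script. */
typedef enum { ST_IDLE, ST_PLAYING, ST_DONE } chan_state;

/* Hypothetical completion event: which device finished which operation. */
typedef struct { int dev; int type; } event;
enum { EV_PLAY_DONE = 1 };

#define NCHAN 4
#define QCAP  16

static chan_state state[NCHAN];
static event queue[QCAP];
static size_t q_head, q_tail;

/* Stand-in for a non-blocking function: it only starts the operation and
 * returns; completion is reported later as an event. */
static void start_play(int dev)
{
    state[dev] = ST_PLAYING;
    queue[q_tail++ % QCAP] = (event){dev, EV_PLAY_DONE};
}

/* Stand-in for waiting on the next event; returns 0 when none is pending. */
static int next_event(event *ev)
{
    if (q_head == q_tail)
        return 0;
    *ev = queue[q_head++ % QCAP];
    return 1;
}

/* A single thread drives every channel: start work on all of them, then
 * dispatch each completion event to the channel it belongs to. */
size_t run_all_channels(void)
{
    size_t finished = 0;
    event ev;

    for (int d = 0; d < NCHAN; d++)
        start_play(d);
    while (next_event(&ev)) {
        if (ev.type == EV_PLAY_DONE && state[ev.dev] == ST_PLAYING) {
            state[ev.dev] = ST_DONE;
            finished++;
        }
    }
    return finished;
}
```

The key design point is that channel state lives in data, not on a thread's stack, so adding channels adds table entries rather than threads.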

2.3 Synchronous Programming Model

The synchronous programming model uses functions that block application execution until the function completes. This model requires that each channel be controlled from a separate thread or process. This allows you to assign distinct applications to different channels dynamically in real time.


Synchronous programming models allow you to scale an application by simply instantiating more

threads or processes (one per channel). This programming model may be easy to code and

manage but it relies on the system to manage scalability. Applying the synchronous programming

model can consume large amounts of system overhead, which reduces the achievable densities and

negatively impacts timely servicing of both hardware and software interrupts. Using this model, a

developer can only solve system performance issues by adding memory or increasing CPU speed

or both. The synchronous programming models may be useful for testing or very low-density

solutions.

For complete information on synchronous programming models, see the Standard Runtime Library

API Programming Guide.


3. Device Handling

This chapter describes the concept of a voice device and how voice devices are named and used.

•Device Concepts . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 23

•Voice Device Names . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 23

3.1 Device Concepts

The following concepts are key to understanding devices and device handling:

device

A device is a computer component controlled through a software device driver. A resource board (such as a voice, fax, or conferencing resource board) or a network interface board contains one or more logical board devices. Each channel or time slot on the board is also considered a device.

device channel

A device channel refers to a data path that processes one incoming or outgoing call at a time (equivalent to the terminal equipment terminating a phone line). The first two numbers in the product naming scheme identify the number of device channels for a given product. For example, there are 24 voice device channels on a D/240JCT-T1 board and 30 on a D/300JCT-E1.

device name

A device name is a literal reference to a device, used to gain access to the device via an xx_open( ) function, where “xx” is the prefix defining the device to be opened. For example, “dx” is the prefix for voice devices, “fx” for fax devices, “ms” for modular station interface (MSI) devices, and so on.

device handle

A device handle is a numerical reference to a device, obtained when a device is opened using xx_open( ), where “xx” is the prefix defining the device to be opened. The device handle is used for all operations on that device.

physical and virtual boards

The API functions distinguish between physical boards and virtual boards. The device driver views a single physical voice board with more than four channels as multiple emulated D/4x boards. These emulated boards are called virtual boards. For example, a D/120JCT-LS with 12 channels of voice processing contains 3 virtual boards. A DM/V480A-2T1 board with 48 channels of voice processing and 2 T-1 trunk lines contains 12 virtual voice boards and 2 virtual network interface boards.
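As a rough illustration of the virtual board arithmetic, the C sketch below computes how many virtual voice boards a physical board presents. It assumes four channels per emulated D/4x board and rounds a partial group of channels up to a whole virtual board; the function name and the rounding rule are assumptions for illustration, not part of the voice API. The results agree with the D/120JCT-LS (3 virtual boards) and DM/V480A-2T1 (12 virtual voice boards) examples above.

```c
#include <assert.h>

/* Each emulated D/4x virtual board holds at most four channels. */
#define CHANNELS_PER_VIRTUAL_BOARD 4

/* Hypothetical helper: number of virtual voice boards presented for a
 * physical board with the given number of voice channels. A partial
 * group of channels is rounded up to a whole virtual board (assumption). */
static int virtual_voice_boards(int voice_channels)
{
    assert(voice_channels >= 0);
    return (voice_channels + CHANNELS_PER_VIRTUAL_BOARD - 1)
           / CHANNELS_PER_VIRTUAL_BOARD;
}
```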

3.2 Voice Device Names

The Intel® Dialogic® system software assigns a device name to each device or each component on a board. A voice device is named dxxxBn, where n is the device number assigned in sequential order down the list of sorted voice boards. A device corresponds to a grouping of two or four voice channels.

For example, a D/240JCT-T1 board employs 24 voice channels; the Intel® Dialogic® system software therefore divides the D/240JCT-T1 into 6 voice board devices, each consisting of 4 channels. Examples of board device names for voice boards are dxxxB1 and dxxxB2.

A device name can be appended with a channel or component identifier. A voice channel device is named dxxxBnCy, where y corresponds to one of the voice channels. Examples of channel device names for voice boards are dxxxB1C1 and dxxxB1C2.
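The naming rule can be sketched in C as follows. This sketch assumes that every board device groups four channels (as in the D/240JCT-T1 example) and that channels are numbered from 1 across all voice boards in sorted order; board_device_name( ) and channel_device_name( ) are hypothetical helpers, not voice library functions. The strings they produce are the kind of device name passed to dx_open( ).

```c
#include <assert.h>
#include <stdio.h>

/* Assumption: each voice board device groups four channels. Boards whose
 * devices group two channels would need a different divisor. */
#define CHANNELS_PER_BOARD_DEVICE 4

/* Hypothetical helper: writes the board device name "dxxxBn" for a
 * 1-based channel number; returns the snprintf() result. */
static int board_device_name(int channel, char *buf, size_t len)
{
    assert(channel >= 1);
    return snprintf(buf, len, "dxxxB%d",
                    (channel - 1) / CHANNELS_PER_BOARD_DEVICE + 1);
}

/* Hypothetical helper: writes the channel device name "dxxxBnCy" for the
 * same 1-based channel number; returns the snprintf() result. */
static int channel_device_name(int channel, char *buf, size_t len)
{
    assert(channel >= 1);
    return snprintf(buf, len, "dxxxB%dC%d",
                    (channel - 1) / CHANNELS_PER_BOARD_DEVICE + 1,
                    (channel - 1) % CHANNELS_PER_BOARD_DEVICE + 1);
}
```

Under these assumptions, channel 5 maps to dxxxB2C1 and channel 24 (the last channel of a D/240JCT-T1) maps to dxxxB6C4.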

For complete information on device handling, see the Standard Runtime Library API Programming Guide.


4. Event Handling

This chapter provides information on functions used to retrieve and handle events. Topics include:

• Overview of Event Handling

• Event Management Functions

4.1 Overview of Event Handling

An event indicates that a specific activity has occurred on a channel. The voice driver reports channel activity to the application program in the form of events, which allows the program to identify and respond to a specific occurrence on a channel. Events provide feedback on the progress and completion of functions and indicate the occurrence of other channel activities. Voice library events are defined in the dxxxlib.h header file.

For a list of events that may be returned by the voice software, see the Voice API Library Reference.

4.2 Event Management Functions

Event management functions are used to retrieve and handle events sent to the application from the firmware. These functions are contained in the Standard Runtime Library (SRL) and defined in srllib.h. The SRL provides a set of common system functions that are device-independent and applicable to all Intel® Dialogic® devices. For more information on event management and event handling, see the Standard Runtime Library API Programming Guide.

Event management functions include:

• sr_enbhdlr( )

• sr_dishdlr( )

• sr_getevtdev( )

• sr_getevttype( )

• sr_getevtlen( )

• sr_getevtdatap( )

For details on SRL functions, see the Standard Runtime Library API Library Reference.

The event management functions retrieve and handle voice device termination events for functions that run in asynchronous mode, such as dx_dial( ) and dx_play( ). For complete function reference information, see the Voice API Library Reference.
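In asynchronous mode, a single loop can service many channels: each termination event is retrieved, its device handle and event type are read, and the next operation is started on that channel. The sketch below models only that dispatch decision. The enum names, the call flow (dial, play a prompt, collect digits, record) and MAX_CHANNELS are hypothetical, and the SRL calls that would supply the device handle and event type are not called here.

```c
#include <assert.h>

/* Hypothetical stand-ins for termination event types such as TDX_DIAL,
 * TDX_PLAY, TDX_GETDIG, TDX_RECORD and TDX_ERROR; the real values are
 * defined in dxxxlib.h. */
enum sketch_evt { EVT_DIAL, EVT_PLAY, EVT_GETDIG, EVT_RECORD, EVT_ERROR };

/* Hypothetical next operation the application would start on a channel. */
enum sketch_action { ACT_PLAY_PROMPT, ACT_GET_DIGITS, ACT_RECORD, ACT_HANG_UP };

/* Hypothetical limit; a small integer index stands in for the device
 * handle that sr_getevtdev( ) would return. */
#define MAX_CHANNELS 8

/* Last operation started on each channel, so one loop can track many. */
static enum sketch_action last_action[MAX_CHANNELS];

/* Decides what to start next on the channel that reported the event. */
static enum sketch_action on_termination_event(int dev, enum sketch_evt type)
{
    enum sketch_action next;

    assert(dev >= 0 && dev < MAX_CHANNELS);
    switch (type) {
    case EVT_DIAL:   next = ACT_PLAY_PROMPT; break; /* dialing finished */
    case EVT_PLAY:   next = ACT_GET_DIGITS;  break; /* prompt finished */
    case EVT_GETDIG: next = ACT_RECORD;      break; /* digits collected */
    case EVT_RECORD:                                 /* flow complete */
    case EVT_ERROR:                                  /* any failure */
    default:         next = ACT_HANG_UP;     break;
    }
    last_action[dev] = next;
    return next;
}
```

In a real application this decision would sit inside the event loop, fed by sr_getevtdev( ) and sr_getevttype( ); see the Standard Runtime Library API Library Reference for those functions.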

26 Voice API for Windows Operating Systems Programming Guide — November 2002

Event Handling

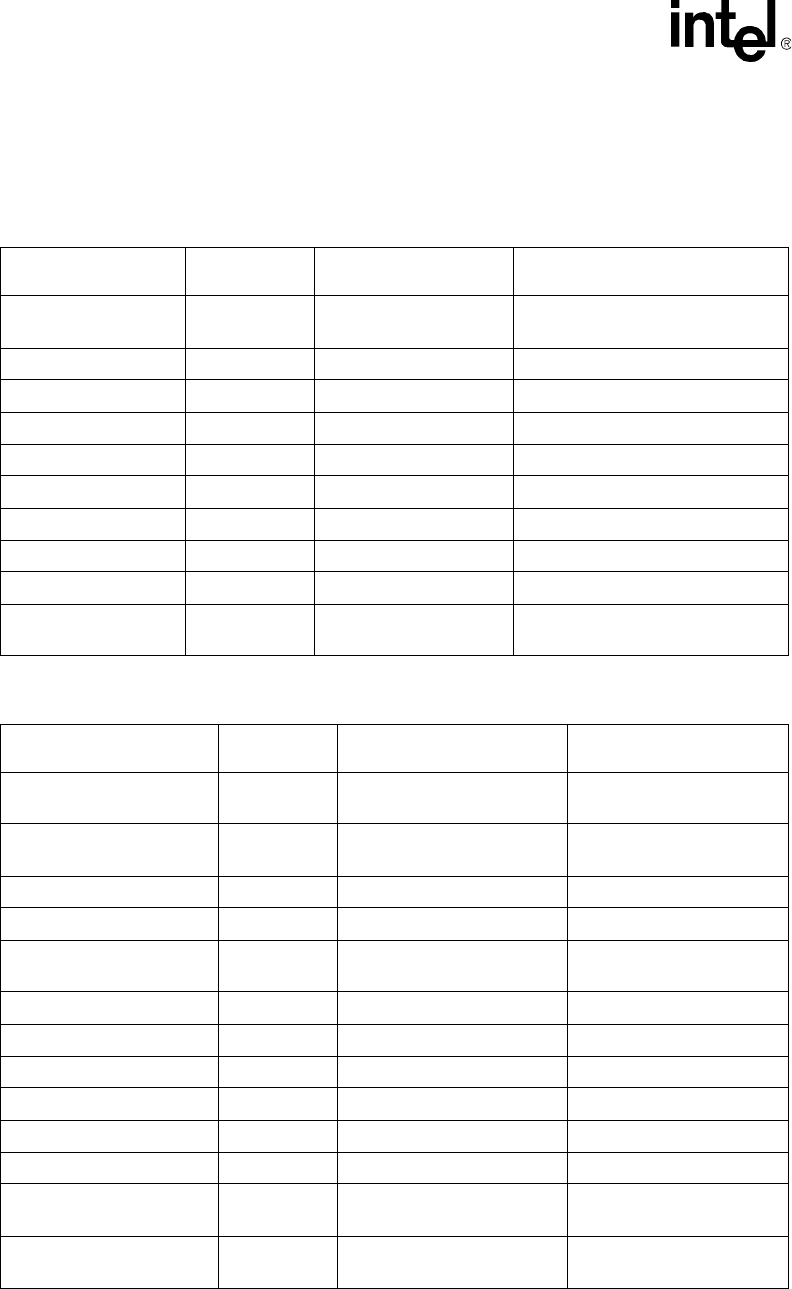

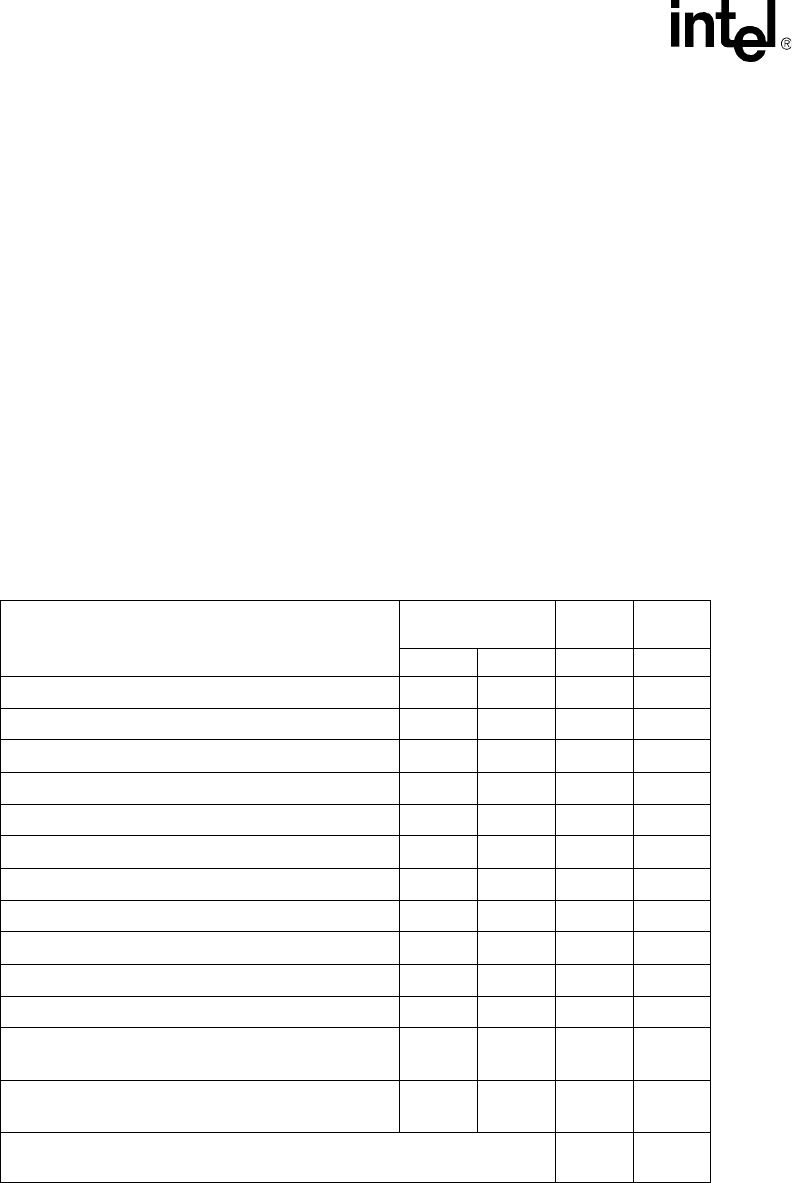

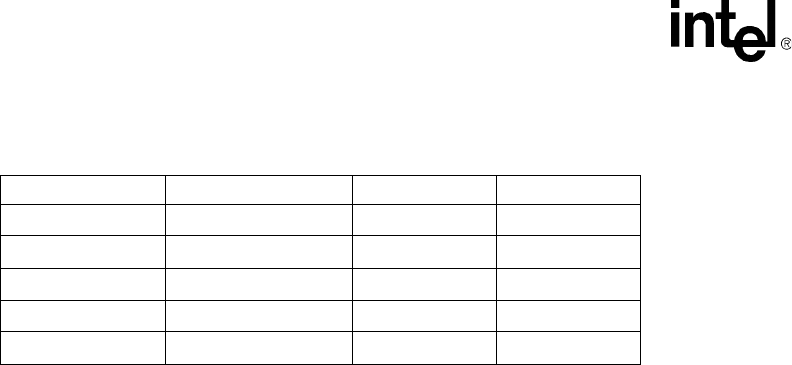

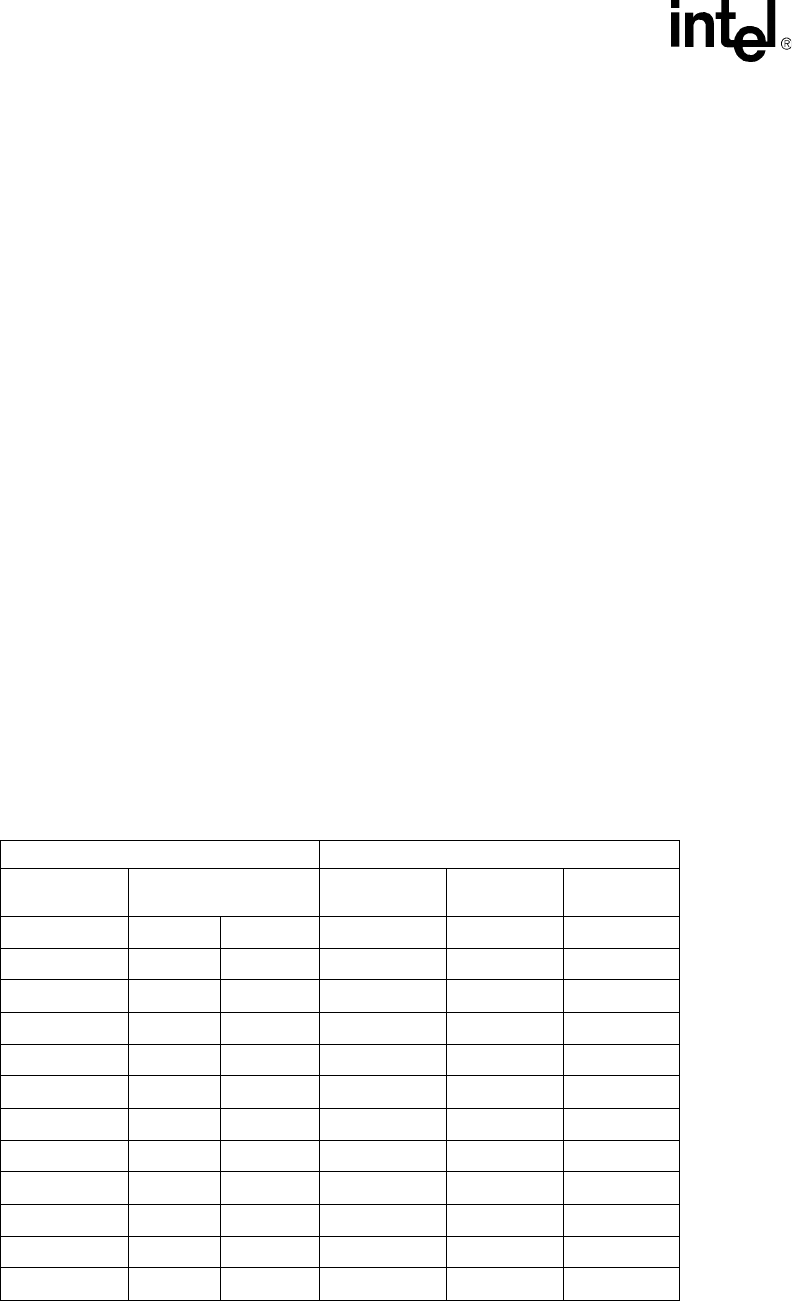

The event management functions applicable to voice boards are listed in the following tables. Table 1 lists the input values required by the event management functions. Table 2 lists the values returned by the event management functions used with voice devices.

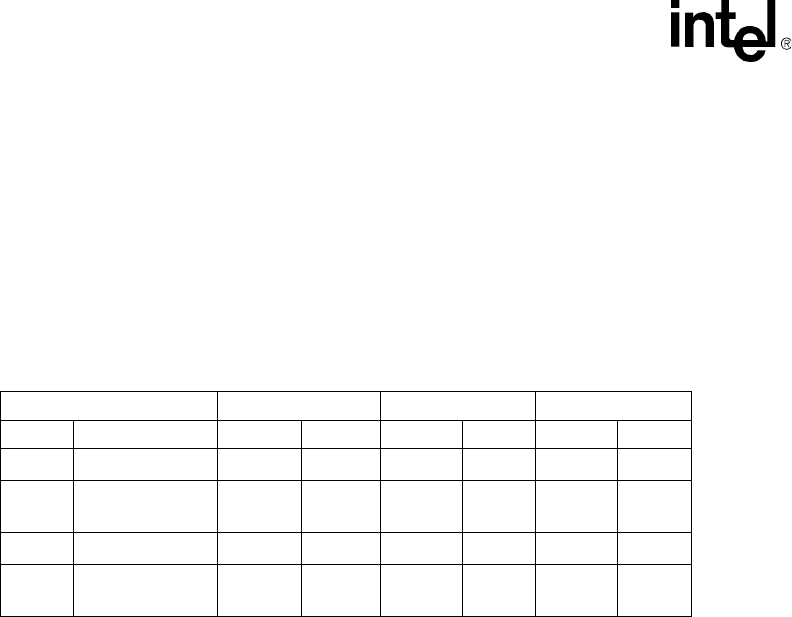

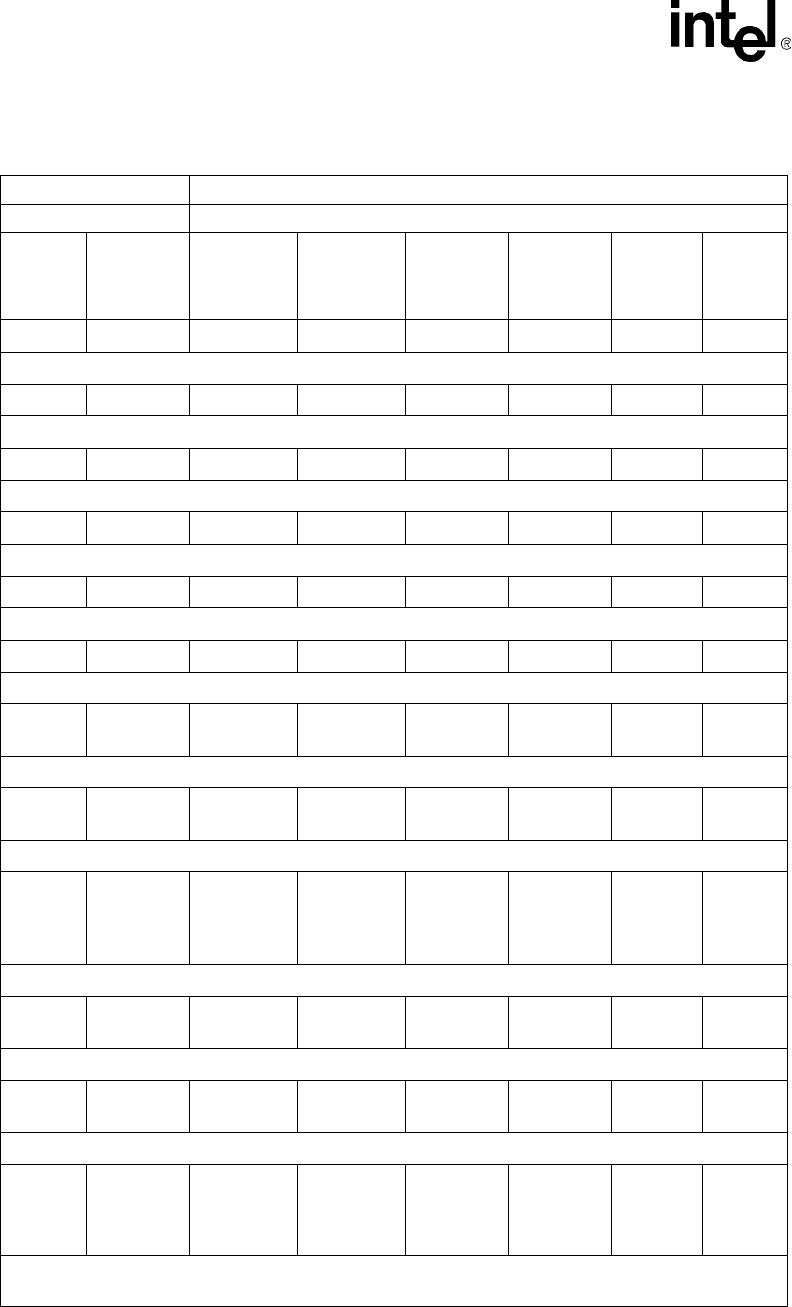

Table 1. Voice Device Inputs for Event Management Functions

| Event Management Function | Voice Device Input | Valid Value | Related Voice Functions |
|---|---|---|---|
| sr_enbhdlr( ) (enable event handler) | evt_type | TDX_PLAY | dx_play( ) |
| | | TDX_PLAYTONE | dx_playtone( ) |
| | | TDX_RECORD | dx_rec( ) |
| | | TDX_GETDIG | dx_getdig( ), dx_getdigEx( ) |
| | | TDX_DIAL | dx_dial( ) |
| | | TDX_CALLP | dx_dial( ) |
| | | TDX_SETHOOK | dx_sethook( ) |
| | | TDX_WINK | dx_wink( ) |
| | | TDX_ERROR | All asynchronous functions |
| sr_dishdlr( ) (disable event handler) | evt_type | As above | As above |

Table 2. Voice Device Returns from Event Management Functions

| Event Management Function | Return Description | Returned Value | Related Voice Functions |
|---|---|---|---|
| sr_getevtdev( ) (get device handle) | device | voice device handle | |
| sr_getevttype( ) (get event type) | event type | TDX_PLAY | dx_play( ) |
| | | TDX_PLAYTONE | dx_playtone( ) |
| | | TDX_RECORD | dx_rec( ) |
| | | TDX_GETDIG | dx_getdig( ), dx_getdigEx( ) |
| | | TDX_DIAL | dx_dial( ) |
| | | TDX_CALLP | dx_dial( ) |
| | | TDX_CST | dx_setevtmsk( ) |
| | | TDX_SETHOOK | dx_sethook( ) |
| | | TDX_WINK | dx_wink( ) |
| | | TDX_ERROR | All asynchronous functions |
| sr_getevtlen( ) (get event data length) | event length | sizeof (DX_CST) | |
| sr_getevtdatap( ) (get pointer to event data) | event data | pointer to DX_CST structure | |
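Table 2 lists the event length as sizeof (DX_CST) and the event data as a pointer to a DX_CST structure. A defensive pattern is to check the reported length before copying the data, as sketched below. The struct sketch_cst type is a hypothetical stand-in for DX_CST (see the Voice API Library Reference for its real fields), and read_cst( ) is an illustrative helper whose arguments would, in a real handler, come from sr_getevtdatap( ) and sr_getevtlen( ).

```c
#include <stddef.h>
#include <string.h>

/* Hypothetical stand-in for DX_CST; the real layout is in dxxxlib.h. */
struct sketch_cst {
    unsigned short event;
    unsigned short data;
};

/* Copies the event data into *out only if the pointer is valid and the
 * reported length matches the structure size. Returns 0 on success and
 * -1 otherwise, following the voice library's failure convention. */
static int read_cst(const void *datap, long len, struct sketch_cst *out)
{
    if (datap == NULL || out == NULL || len != (long)sizeof *out)
        return -1;
    memcpy(out, datap, sizeof *out);
    return 0;
}
```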


5. Error Handling

This chapter discusses how to handle errors that can occur when running an application.

All voice library functions return a value to indicate success or failure. A return value of zero or greater indicates success; a return value of -1 indicates failure.

If a voice library function fails, call the standard attribute functions ATDV_LASTERR( ) and ATDV_ERRMSGP( ) to determine the reason for failure. For more information on these functions, see the Standard Runtime Library API Library Reference.

If an extended attribute function fails, it reports the failure in one of two ways, depending on its return type. An extended attribute function that returns a pointer returns a pointer to the ASCIIZ string “Unknown device” if it fails. An extended attribute function that does not return a pointer returns a value of AT_FAILURE if it fails. Extended attribute functions for the voice library are prefixed with “ATDX_”.

Notes: 1. The dx_open( ) and dx_close( ) functions are exceptions to the above error handling rules. If these functions fail, the return code is -1; use dx_fileerrno( ) (rather than ATDV_LASTERR( )) to obtain the system error value.

2. If ATDV_LASTERR( ) returns the EDX_SYSTEM error code, an operating system error has occurred. Use dx_fileerrno( ) to obtain the system error value.

For a list of errors that can be returned by a voice library function, see the Voice API Library Reference. You can also look up the error codes in the dxxxlib.h file.
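The three failure conventions described above can be summarized as small predicates, sketched below in C. The helper names are hypothetical, and the AT_FAILURE sentinel is passed in as a parameter so the sketch does not assume its numeric value; only the -1 return convention and the “Unknown device” string come from the text above.

```c
#include <string.h>

/* Voice library functions: -1 signals failure; 0 or greater is success. */
static int voice_call_failed(int rc)
{
    return rc == -1;
}

/* Value-returning ATDX_ functions signal failure with AT_FAILURE. The
 * sentinel is supplied by the caller so its value is not assumed here. */
static int attr_value_failed(long value, long at_failure)
{
    return value == at_failure;
}

/* Pointer-returning ATDX_ functions point to "Unknown device" on failure. */
static int attr_pointer_failed(const char *s)
{
    return s != NULL && strcmp(s, "Unknown device") == 0;
}
```

When voice_call_failed( ) is true, a real application would then call ATDV_LASTERR( ) and ATDV_ERRMSGP( ) on the device handle, or dx_fileerrno( ) for dx_open( ), dx_close( ), and EDX_SYSTEM errors.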


6. Application Development Guidelines

This chapter provides programming guidelines and techniques for developing an application using the voice library. The following topics are discussed:

• General Considerations

• Fixed and Flexible Routing Configurations

• Fixed Routing Configuration Restrictions

• Additional DM3 Considerations

• Using Wink Signaling

6.1 General Considerations

The following considerations apply to all applications written using the voice API:

• Busy and Idle States

• I/O Terminations

• Clearing Structures Before Use

See the feature chapters for programming guidelines specific to a feature, such as Call Progress Analysis, Caller ID, and so on.

6.1.1 Busy and Idle States

The operation of some library functions depends on the state of the device when the function call is made. A device is in an idle state when it is not being used, and in a busy state when it is dialing, stopped, being configured, or being used for other I/O functions. Idle represents a single state; busy represents the set of states that a device may be in when it is not idle. State-dependent functions do not distinguish between the individual states represented by the term busy; they distinguish only between idle and busy states.

For more information on categories of functions and their description, see the Voice API Library Reference.

6.1.2 I/O Terminations

When an I/O function is issued, you must pass a set of termination conditions as one of the function parameters. Termination conditions are events monitored during the I/O process that cause the I/O function to terminate. When a termination condition is met, ATDX_TERMMSK( ) returns the termination reason. If the I/O function is running in synchronous mode, ATDX_TERMMSK( ) returns a termination reason after the I/O function has completed. If the I/O function is running in asynchronous mode, ATDX_TERMMSK( ) returns a termination reason after the function termination event has arrived. I/O functions can terminate under several conditions, as described later in this section.

You can predict events that will occur during I/O (such as a digit being received or the call being disconnected) and set termination conditions accordingly. The flow of control in a voice application is based on the termination condition. Setting these conditions properly allows you to build voice applications that can anticipate a caller's actions.

To set the termination conditions, place values in the fields of a DV_TPT structure. If you set more than one termination condition, the first one that occurs terminates the I/O function. The DV_TPT structures can be configured as a linked list or an array, with each DV_TPT specifying a single terminating condition. For more information on the DV_TPT structure, which is defined in srllib.h, see the Voice API Library Reference.

The termination conditions are described in the following paragraphs.

byte transfer count

This termination condition applies when playing or recording a file with dx_play( ) or dx_rec( ). The maximum number of bytes is set in the DX_IOTT structure. This condition causes termination if the maximum number of bytes is used before any of the termination conditions specified in the DV_TPT occurs. For information about setting the number of bytes in the DX_IOTT, see the Voice API Library Reference.

dx_stopch( ) occurred