VxWorks Application Programmer's Guide, 6.7

Page Count: 432

- VxWorks Application Programmer's Guide, 6.7

- Contents

- 1 Overview

- 2 Real-Time Processes

- 2.1 Introduction

- 2.2 About Real-time Processes

- 2.3 Configuring VxWorks For Real-time Processes

- 2.4 Using RTPs Without MMU Support

- 2.5 About VxWorks RTP Virtual Memory Models

- 2.6 Using the Overlapped RTP Virtual Memory Model

- 3 RTP Applications

- 3.1 Introduction

- 3.2 Configuring VxWorks For RTP Applications

- 3.3 Developing RTP Applications

- RTP Applications With Shared Libraries and Plug-Ins

- RTP Applications for the Overlapped Virtual Memory Model

- RTP Applications for UP and SMP Configurations of VxWorks

- Migrating Kernel Applications to RTP Applications

- 3.3.1 RTP Application Structure

- 3.3.2 VxWorks Header Files

- 3.3.3 RTP Application APIs: System Calls and Library Routines

- 3.3.4 Reducing Executable File Size With the strip Facility

- 3.3.5 RTP Applications and Multitasking

- 3.3.6 Checking for Required Kernel Support

- 3.3.7 Using Hook Routines

- 3.3.8 Developing C++ Applications

- 3.3.9 Using POSIX Facilities

- 3.3.10 Building RTP Applications

- 3.4 Developing Static Libraries, Shared Libraries and Plug-Ins

- 3.5 Creating and Using Shared Data Regions

- 3.6 Executing RTP Applications

- Caveat With Regard to Stripped Executables

- Starting an RTP Application

- Stopping an RTP Application

- Storing Application Executables

- 3.6.1 Running Applications Interactively

- 3.6.2 Running Applications Automatically

- 3.6.3 Spawning Tasks and Executing Routines in an RTP Application

- 3.6.4 Applications and Symbol Registration

- 3.7 Bundling RTP Applications in a System using ROMFS

- 4 Static Libraries, Shared Libraries, and Plug-Ins

- 4.1 Introduction

- 4.2 About Static Libraries, Shared Libraries, and Plug-ins

- 4.3 Additional Documentation

- 4.4 Configuring VxWorks for Shared Libraries and Plug-ins

- 4.5 Common Development Issues: Initialization and Termination

- 4.6 Common Development Facilities

- 4.7 Developing Static Libraries

- 4.8 Developing Shared Libraries

- 4.8.1 About Dynamic Linking

- 4.8.2 Configuring VxWorks for Shared Libraries

- 4.8.3 Initialization and Termination

- 4.8.4 About Shared Library Names and ELF Records

- 4.8.5 Creating Shared Object Names for Shared Libraries

- 4.8.6 Using Different Versions of Shared Libraries

- 4.8.7 Locating and Loading Shared Libraries at Run-time

- 4.8.8 Using Lazy Binding With Shared Libraries

- 4.8.9 Developing RTP Applications That Use Shared Libraries

- 4.8.10 Getting Runtime Information About Shared Libraries

- 4.8.11 Debugging Problems With Shared Library Use

- 4.8.12 Working With Shared Libraries From a Windows Host

- 4.9 Developing Plug-Ins

- 4.10 Using the VxWorks Run-time C Shared Library libc.so

- 5 C++ Development

- 6 Multitasking

- 6.1 Introduction

- 6.2 Tasks and Multitasking

- 6.3 Task Scheduling

- 6.4 Task Creation and Management

- 6.5 Task Error Status: errno

- 6.6 Task Exception Handling

- 6.7 Shared Code and Reentrancy

- 6.8 Intertask and Interprocess Communication

- 6.9 Inter-Process Communication With Public Objects

- 6.10 Object Ownership and Resource Reclamation

- 6.11 Shared Data Structures

- 6.12 Mutual Exclusion

- 6.13 Semaphores

- 6.14 Message Queues

- 6.15 Pipes

- 6.16 VxWorks Events

- 6.17 Message Channels

- 6.18 Network Communication

- 6.19 Signals

- 6.20 Timers

- 7 POSIX Facilities

- 7.1 Introduction

- 7.2 Configuring VxWorks with POSIX Facilities

- 7.3 General POSIX Support

- 7.4 Standard C Library: libc

- 7.5 POSIX Header Files

- 7.6 POSIX Namespace

- 7.7 POSIX Process Privileges

- 7.8 POSIX Process Support

- 7.9 POSIX Clocks and Timers

- 7.10 POSIX Asynchronous I/O

- 7.11 POSIX Advisory File Locking

- 7.12 POSIX Page-Locking Interface

- 7.13 POSIX Threads

- 7.13.1 POSIX Thread Stack Guard Zones

- 7.13.2 POSIX Thread Attributes

- 7.13.3 VxWorks-Specific Pthread Attributes

- 7.13.4 Specifying Attributes when Creating Pthreads

- 7.13.5 POSIX Thread Creation and Management

- 7.13.6 POSIX Thread Attribute Access

- 7.13.7 POSIX Thread Private Data

- 7.13.8 POSIX Thread Cancellation

- 7.14 POSIX Thread Mutexes and Condition Variables

- 7.15 POSIX and VxWorks Scheduling

- 7.16 POSIX Semaphores

- 7.17 POSIX Message Queues

- 7.18 POSIX Signals

- 7.19 POSIX Memory Management

- 7.20 POSIX Trace

- 8 Memory Management

- 9 I/O System

- 9.1 Introduction

- 9.2 Configuring VxWorks With I/O Facilities

- 9.3 Files, Devices, and Drivers

- 9.4 Basic I/O

- 9.4.1 File Descriptors

- 9.4.2 Standard Input, Standard Output, and Standard Error

- 9.4.3 Standard I/O Redirection

- 9.4.4 Open and Close

- 9.4.5 Create and Remove

- 9.4.6 Read and Write

- 9.4.7 File Truncation

- 9.4.8 I/O Control

- 9.4.9 Pending on Multiple File Descriptors with select( )

- 9.4.10 POSIX File System Routines

- 9.5 Buffered I/O: stdio

- 9.6 Other Formatted I/O

- 9.7 Asynchronous Input/Output

- 9.8 Devices in VxWorks

- 10 Local File Systems

- 10.1 Introduction

- 10.2 File System Monitor

- 10.3 Virtual Root File System: VRFS

- 10.4 Highly Reliable File System: HRFS

- 10.4.1 Configuring VxWorks for HRFS

- 10.4.2 Configuring HRFS

- 10.4.3 HRFS and POSIX PSE52

- 10.4.4 Creating an HRFS File System

- 10.4.5 Transactional Operations and Commit Policies

- 10.4.6 Configuring Transaction Points at Runtime

- 10.4.7 File Access Time Stamps

- 10.4.8 Maximum Number of Files and Directories

- 10.4.9 Working with Directories

- 10.4.10 Working with Files

- 10.4.11 I/O Control Functions Supported by HRFS

- 10.4.12 Crash Recovery and Volume Consistency

- 10.4.13 File Management and Full Devices

- 10.5 MS-DOS-Compatible File System: dosFs

- 10.5.1 Configuring VxWorks for dosFs

- 10.5.2 Configuring dosFs

- 10.5.3 Creating a dosFs File System

- 10.5.4 Working with Volumes and Disks

- 10.5.5 Working with Directories

- 10.5.6 Working with Files

- 10.5.7 Disk Space Allocation Options

- 10.5.8 Crash Recovery and Volume Consistency

- 10.5.9 I/O Control Functions Supported by dosFsLib

- 10.5.10 Booting from a Local dosFs File System Using SCSI

- 10.6 Raw File System: rawFs

- 10.7 CD-ROM File System: cdromFs

- 10.8 Read-Only Memory File System: ROMFS

- 10.9 Target Server File System: TSFS

- 11 Error Detection and Reporting

- A Kernel to RTP Application Migration

- A.1 Introduction

- A.2 Migrating Kernel Applications to Processes

- A.2.1 Reducing Library Size

- A.2.2 Limiting Process Scope

- A.2.3 Using C++ Initialization and Finalization Code

- A.2.4 Eliminating Hardware Access

- A.2.5 Eliminating Interrupt Contexts In Processes

- A.2.6 Redirecting I/O

- A.2.7 Process and Task API Differences

- A.2.8 Semaphore Differences

- A.2.9 POSIX Signal Differences

- A.2.10 Networking Issues

- A.2.11 Header File Differences

- A.3 Differences in Kernel and RTP APIs

- Index

VxWorks®

APPLICATION PROGRAMMER'S GUIDE

6.7

VxWorks Application Programmer's Guide, 6.7

Copyright © 2008 Wind River Systems, Inc.

All rights reserved. No part of this publication may be reproduced or transmitted in any form or by any means without the prior written permission of Wind River Systems, Inc.

Wind River, Tornado, and VxWorks are registered trademarks of Wind River Systems, Inc. The Wind River logo is a trademark of Wind River Systems, Inc. Any third-party trademarks referenced are the property of their respective owners. For further information regarding Wind River trademarks, please see:

www.windriver.com/company/terms/trademark.html

This product may include software licensed to Wind River by third parties. Relevant notices (if any) are provided in your product installation at the following location: installDir/product_name/3rd_party_licensor_notice.pdf.

Wind River may refer to third-party documentation by listing publications or providing links to third-party Web sites for informational purposes. Wind River accepts no responsibility for the information provided in such third-party documentation.

Corporate Headquarters

Wind River

500 Wind River Way

Alameda, CA 94501-1153

U.S.A.

Toll free (U.S.A.): 800-545-WIND

Telephone: 510-748-4100

Facsimile: 510-749-2010

For additional contact information, see the Wind River Web site:

www.windriver.com

For information on how to contact Customer Support, see:

www.windriver.com/support

VxWorks

Application Programmer's Guide

6.7

17 Nov 08

Part #: DOC-16304-ND-00

Contents

1 Overview ............................................................................................... 1

1.1 Introduction ............................................................................................................. 1

1.2 Related Documentation Resources ..................................................................... 2

1.3 VxWorks Configuration and Build ..................................................................... 3

2 Real-Time Processes ........................................................................... 5

2.1 Introduction ............................................................................................................. 6

2.2 About Real-time Processes ................................................................................... 7

2.2.1 RTPs and Scheduling ............................................................................... 8

2.2.2 RTP Creation ............................................................................................. 8

2.2.3 RTP Termination ...................................................................................... 10

2.2.4 RTPs and Memory ................................................................................... 10

Virtual Memory Models .......................................................................... 11

Memory Protection .................................................................................. 11

2.2.5 RTPs and Tasks ......................................................................................... 11

Numbers of Tasks and RTPs .................................................................. 12

Initial Task in an RTP .............................................................................. 12

RTP Tasks and Memory .......................................................................... 12

2.2.6 RTPs and Inter-Process Communication .............................................. 13

2.2.7 RTPs, Inheritance, Zombies, and Resource Reclamation ................... 13

Inheritance ................................................................................................. 13

Zombie Processes ..................................................................................... 14

Resource Reclamation .............................................................................. 14

2.2.8 RTPs and Environment Variables .......................................................... 15

Setting Environment Variables From Outside a Process .................... 15

Setting Environment Variables From Within a Process ..................... 16

2.2.9 RTPs and POSIX ....................................................................................... 16

POSIX PSE52 Support .............................................................................. 16

2.3 Configuring VxWorks For Real-time Processes .............................................. 17

2.3.1 Basic RTP Support .................................................................................... 17

2.3.2 MMU Support for RTPs .......................................................................... 18

2.3.3 Additional Component Options ............................................................ 19

2.3.4 Configuration and Build Facilities ......................................................... 20

2.4 Using RTPs Without MMU Support .................................................................. 20

Configuration With Process Support and Without an MMU ............. 22

2.5 About VxWorks RTP Virtual Memory Models ............................................... 23

2.5.1 Flat RTP Virtual Memory Model .......................................................... 23

2.5.2 Overlapped RTP Virtual Memory Model ............................................ 24

2.6 Using the Overlapped RTP Virtual Memory Model ...................................... 26

2.6.1 About User Regions and the RTP Code Region ................................. 26

User Regions of Virtual Memory ........................................................... 27

RTP Code Region in Virtual Memory .................................................. 27

2.6.2 Configuring VxWorks for Overlapped RTP Virtual Memory .......... 29

Getting Information About User Regions ............................................ 29

Identifying the RTP Code Region .......................................................... 31

Setting Configuration Parameters for the RTP Code Region ............ 33

2.6.3 Using RTP Applications With Overlapped RTP Virtual Memory ... 35

Building Absolutely-Linked RTP Executables ..................................... 35

Stripping Absolutely-Linked RTP Executables .................................. 36

Executing Absolutely-Linked RTP Executables ................................. 37

Executing Relocatable RTP Executables ............................................... 38

3 RTP Applications ................................................................................. 39

3.1 Introduction ............................................................................................................. 39

3.2 Configuring VxWorks For RTP Applications .................................................. 40

3.3 Developing RTP Applications ............................................................................. 40

RTP Applications With Shared Libraries and Plug-Ins ...................... 41

RTP Applications for the Overlapped Virtual Memory Model ........ 42

RTP Applications for UP and SMP Configurations of VxWorks ...... 42

Migrating Kernel Applications to RTP Applications .......................... 42

3.3.1 RTP Application Structure ...................................................................... 42

3.3.2 VxWorks Header Files ............................................................................. 43

POSIX Header Files .................................................................................. 44

VxWorks Header File: vxWorks.h ......................................................... 44

Other VxWorks Header Files ................................................................. 44

ANSI Header Files ................................................................................... 44

ANSI C++ Header Files ........................................................................... 45

Compiler -I Flag ........................................................................................ 45

VxWorks Nested Header Files ............................................................... 45

VxWorks Private Header Files ............................................................... 46

3.3.3 RTP Application APIs: System Calls and Library Routines .............. 46

VxWorks System Calls ............................................................................ 46

VxWorks Libraries ................................................................................... 47

Dinkum C and C++ Libraries ................................................................. 48

Custom Libraries ...................................................................................... 48

API Documentation ................................................................................. 48

3.3.4 Reducing Executable File Size With the strip Facility ........................ 48

3.3.5 RTP Applications and Multitasking ...................................................... 49

3.3.6 Checking for Required Kernel Support ................................................ 49

3.3.7 Using Hook Routines ............................................................................... 50

3.3.8 Developing C++ Applications ................................................................ 50

3.3.9 Using POSIX Facilities ............................................................................. 50

3.3.10 Building RTP Applications ..................................................................... 50

3.4 Developing Static Libraries, Shared Libraries and Plug-Ins ......................... 50

3.5 Creating and Using Shared Data Regions ......................................................... 51

3.5.1 Configuring VxWorks for Shared Data Regions ................................. 52

3.5.2 Creating Shared Data Regions ............................................................... 52

3.5.3 Accessing Shared Data Regions ............................................................. 53

3.5.4 Deleting Shared Data Regions ................................................................ 53

3.6 Executing RTP Applications ................................................................................ 54

Caveat With Regard to Stripped Executables ...................................... 54

Starting an RTP Application ................................................................... 55

Stopping an RTP Application ................................................................. 55

Storing Application Executables ............................................................ 56

3.6.1 Running Applications Interactively ...................................................... 57

Starting Applications ............................................................................... 57

Terminating Applications ....................................................................... 58

3.6.2 Running Applications Automatically ................................................... 58

Startup Facility Options .......................................................................... 59

Application Startup String Syntax ......................................................... 60

Specifying Applications with a Startup Configuration Parameter ... 61

Specifying Applications with a Boot Loader Parameter .................... 62

Specifying Applications with a VxWorks Shell Script ........................ 63

Specifying Applications with usrRtpAppInit( ) .................................. 64

3.6.3 Spawning Tasks and Executing Routines in an RTP Application .... 65

3.6.4 Applications and Symbol Registration ................................................. 65

3.7 Bundling RTP Applications in a System using ROMFS ................................ 66

3.7.1 Configuring VxWorks with ROMFS ..................................................... 67

3.7.2 Building a System With ROMFS and Applications ............................ 67

3.7.3 Accessing Files in ROMFS ....................................................................... 67

3.7.4 Using ROMFS to Start Applications Automatically ........................... 68

4 Static Libraries, Shared Libraries, and Plug-Ins ............................... 69

4.1 Introduction ............................................................................................................. 70

4.2 About Static Libraries, Shared Libraries, and Plug-ins .................................. 70

Advantages and Disadvantages of Shared Libraries and Plug-Ins .. 71

4.3 Additional Documentation .................................................................................. 73

4.4 Configuring VxWorks for Shared Libraries and Plug-ins ............................. 73

4.5 Common Development Issues: Initialization and Termination ................... 74

4.5.1 Library and Plug-in Initialization .......................................................... 74

4.5.2 C++ Initialization ..................................................................................... 76

4.5.3 Handling Initialization Failures ............................................................. 76

4.5.4 Shared Library and Plug-in Termination ............................................. 77

Using Cleanup Routines ......................................................................... 77

4.6 Common Development Facilities ........................................................................ 78

4.7 Developing Static Libraries .................................................................................. 78

4.7.1 Initialization and Termination ............................................................... 78

4.8 Developing Shared Libraries ............................................................................... 79

4.8.1 About Dynamic Linking ......................................................................... 79

Dynamic Linker ........................................................................................ 79

Position Independent Code: PIC ............................................................ 80

4.8.2 Configuring VxWorks for Shared Libraries ......................................... 80

4.8.3 Initialization and Termination ............................................................... 80

4.8.4 About Shared Library Names and ELF Records ................................. 80

4.8.5 Creating Shared Object Names for Shared Libraries .......................... 81

Options for Defining Shared Object Names and Versions ................ 82

Match Shared Object Names and Shared Library File Names .......... 82

4.8.6 Using Different Versions of Shared Libraries ...................................... 82

4.8.7 Locating and Loading Shared Libraries at Run-time .......................... 83

Specifying Shared Library Locations: Options and Search Order .... 83

Using the LD_LIBRARY_PATH Environment Variable .................... 84

Using the ld.so.conf Configuration File ................................................ 85

Using the ELF RPATH Record ............................................................... 85

Using the Application Directory ............................................................ 86

Pre-loading Shared Libraries .................................................................. 86

4.8.8 Using Lazy Binding With Shared Libraries .......................................... 87

4.8.9 Developing RTP Applications That Use Shared Libraries ................. 88

4.8.10 Getting Runtime Information About Shared Libraries ....................... 88

4.8.11 Debugging Problems With Shared Library Use .................................. 89

Shared Library Not Found ...................................................................... 89

Incorrectly Started Application .............................................................. 90

Using readelf to Examine Dynamic ELF Files ...................................... 90

4.8.12 Working With Shared Libraries From a Windows Host .................... 92

Using NFS .................................................................................................. 93

Installing NFS on Windows .................................................................... 93

Configuring VxWorks With NFS ........................................................... 93

Testing the NFS Connection ................................................................... 94

4.9 Developing Plug-Ins .............................................................................................. 94

4.9.1 Configuring VxWorks for Plug-Ins ....................................................... 95

4.9.2 Initialization and Termination ............................................................... 95

4.9.3 Developing RTP Applications That Use Plug-Ins ............................... 95

Code Requirements .................................................................................. 95

Build Requirements ................................................................................. 96

Locating Plug-Ins at Run-time ............................................................... 96

Using Lazy Binding With Plug-ins ........................................................ 96

Example of Dynamic Linker API Use ................................................... 97

Example Application Using a Plug-In ................................................... 97

Routines for Managing Plug-Ins ............................................................ 99

4.9.4 Debugging Plug-Ins ................................................................................. 99

4.10 Using the VxWorks Run-time C Shared Library libc.so ................................. 100

5 C++ Development ................................................................................. 101

5.1 Introduction ............................................................................................................. 101

5.2 C++ Code Requirements ....................................................................................... 102

5.3 C++ Compiler Differences ................................................................................... 102

5.3.1 Template Instantiation ............................................................................. 103

5.3.2 Run-Time Type Information ................................................................... 104

5.4 Namespaces ............................................................................................................. 104

5.5 C++ Demo Example ............................................................................................... 105

6 Multitasking .......................................................................................... 107

6.1 Introduction ............................................................................................................. 109

6.2 Tasks and Multitasking ....................................................................................... 110

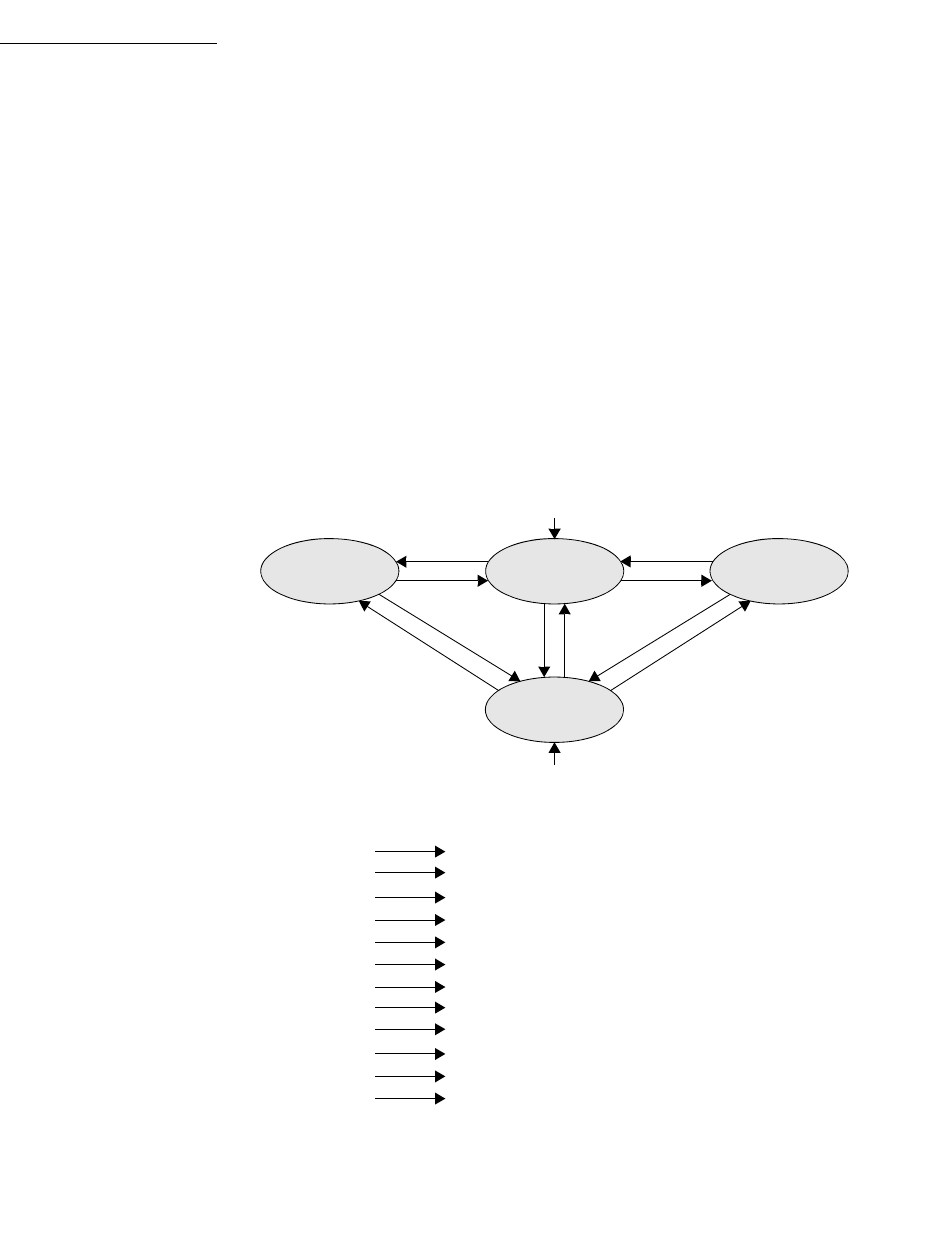

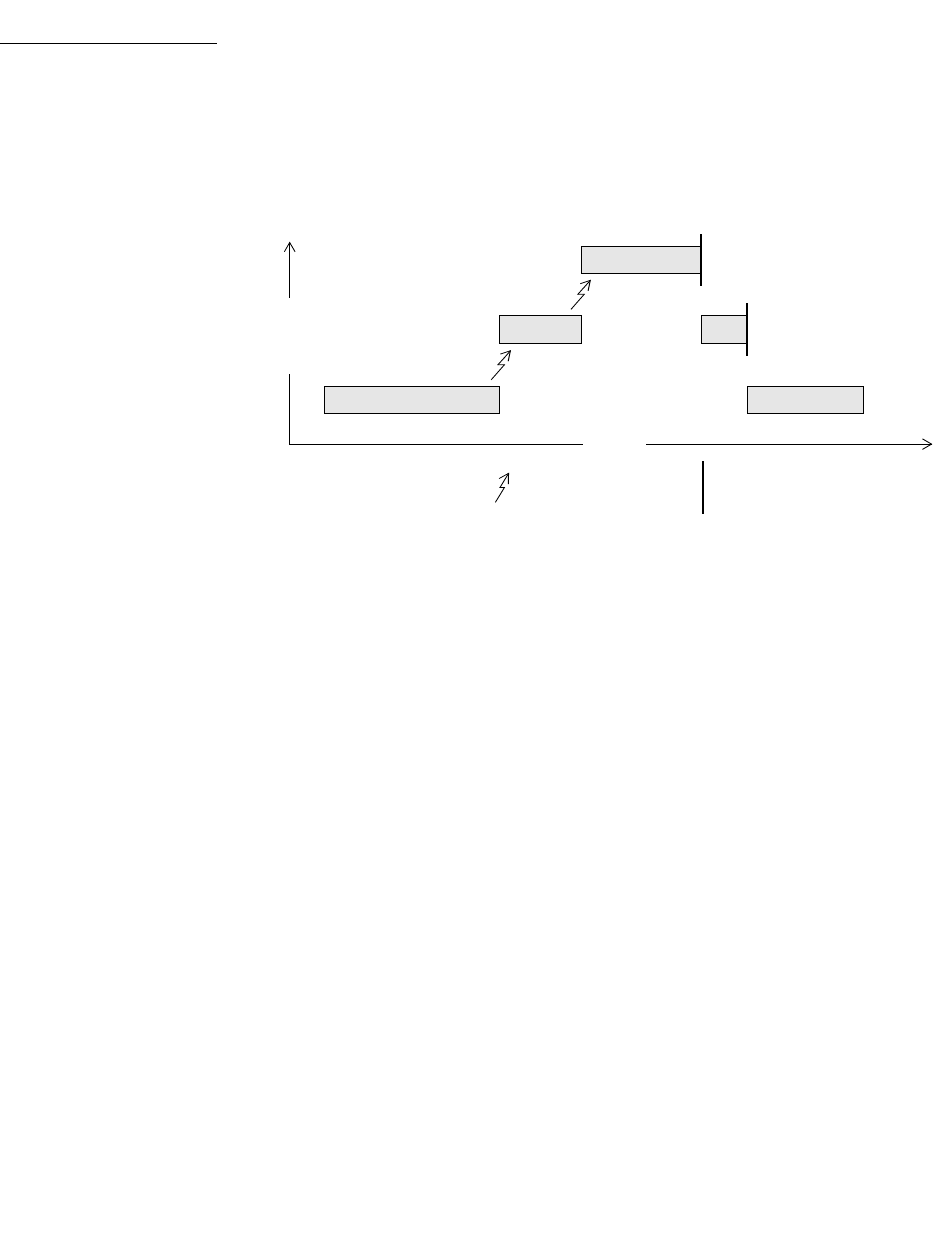

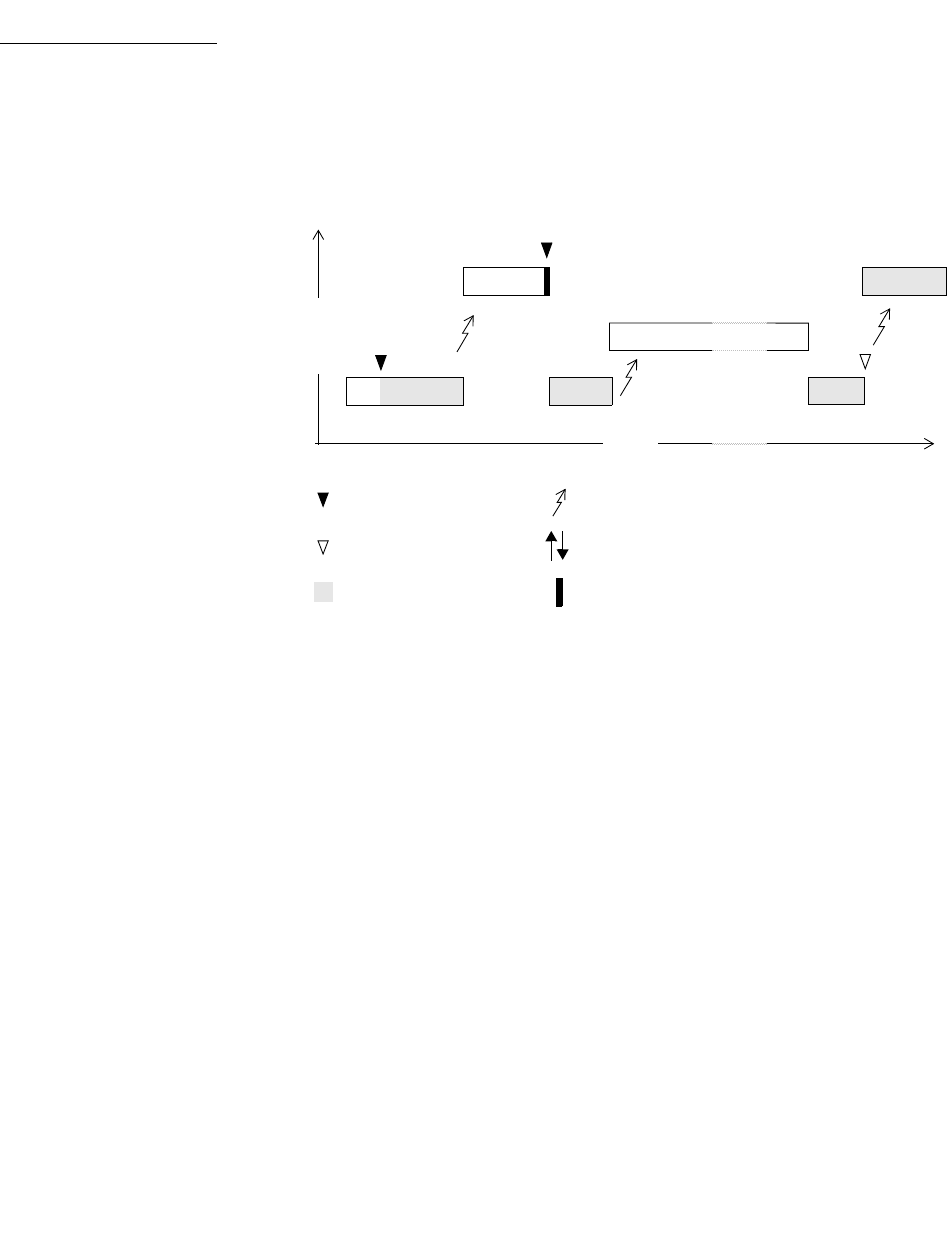

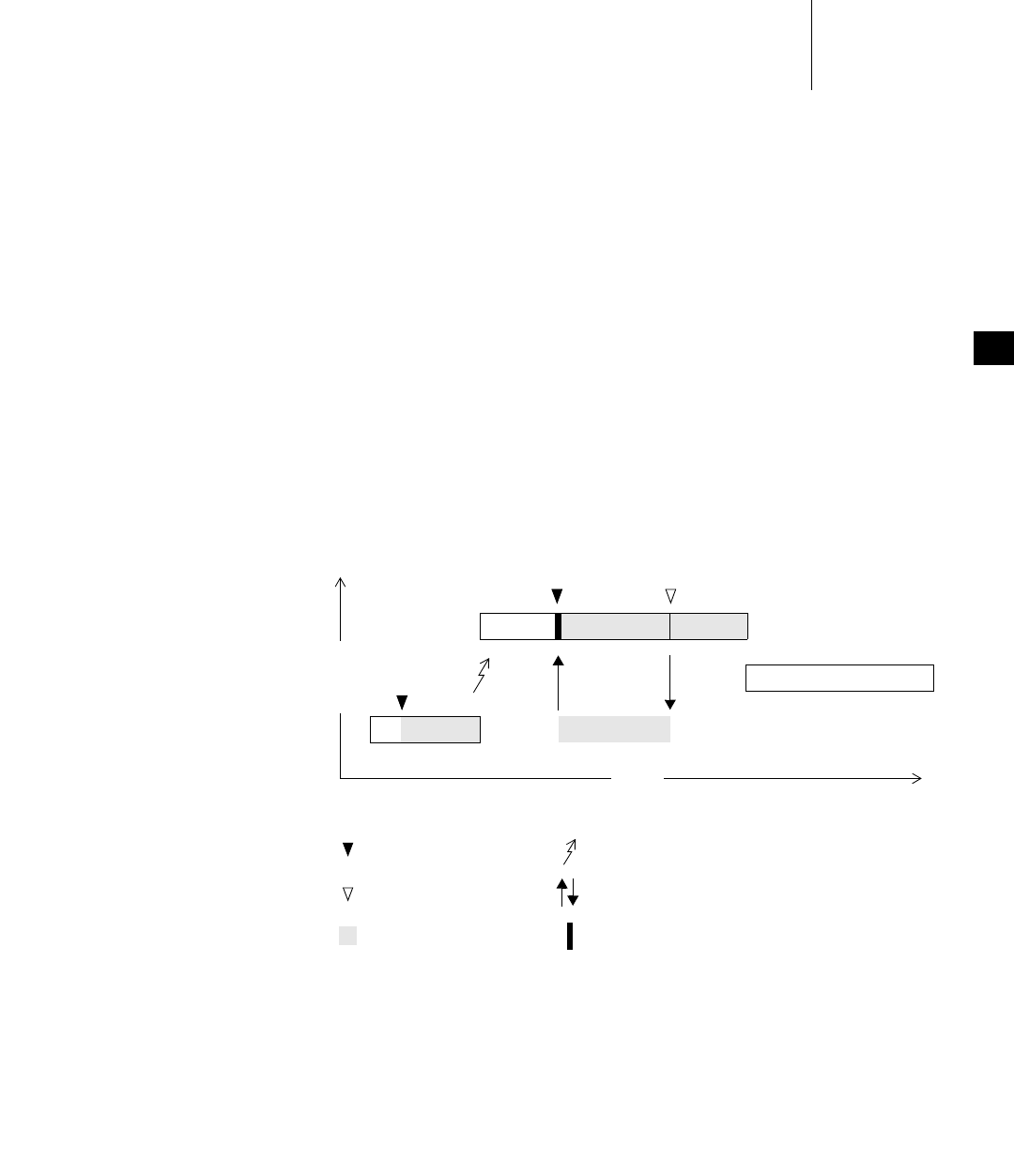

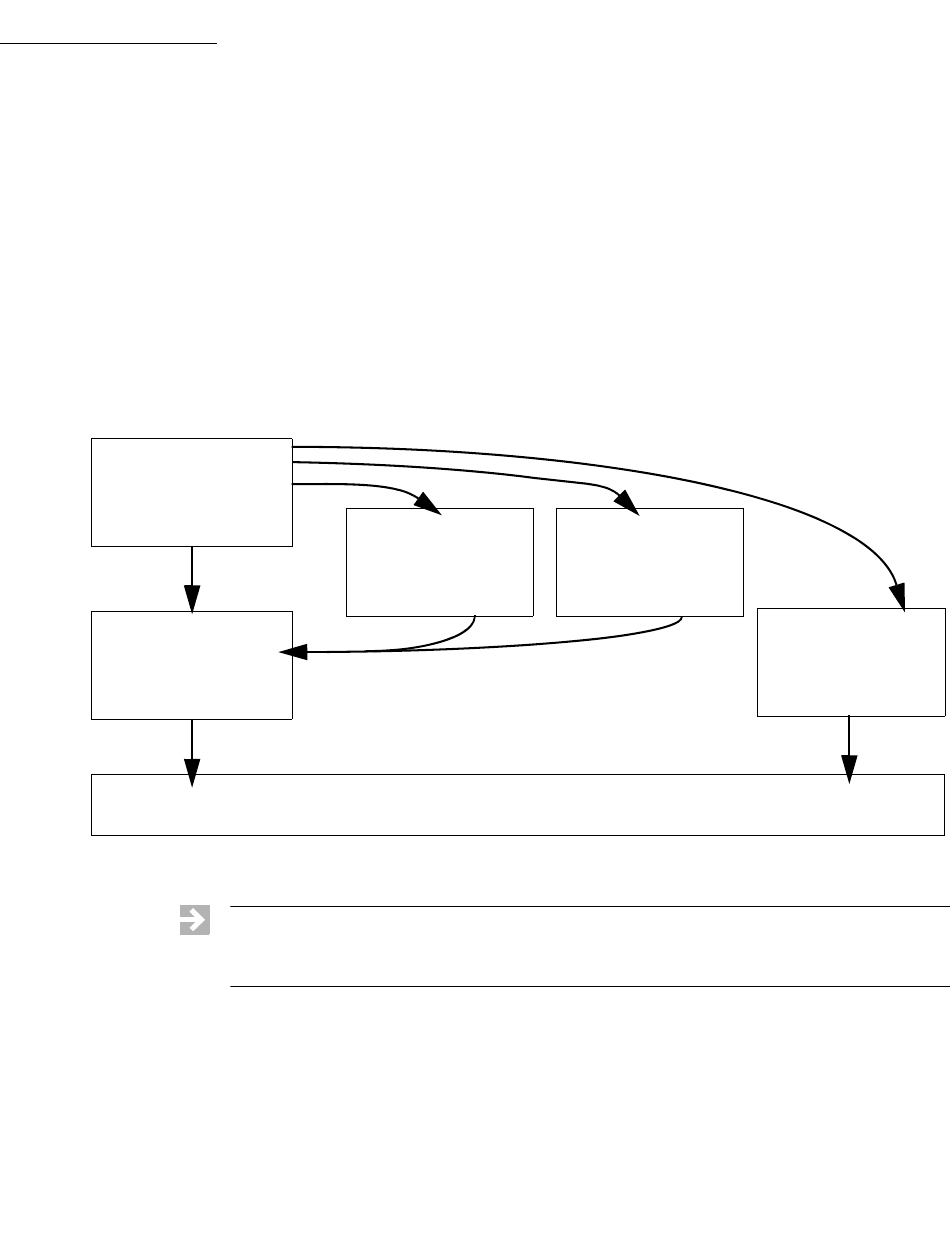

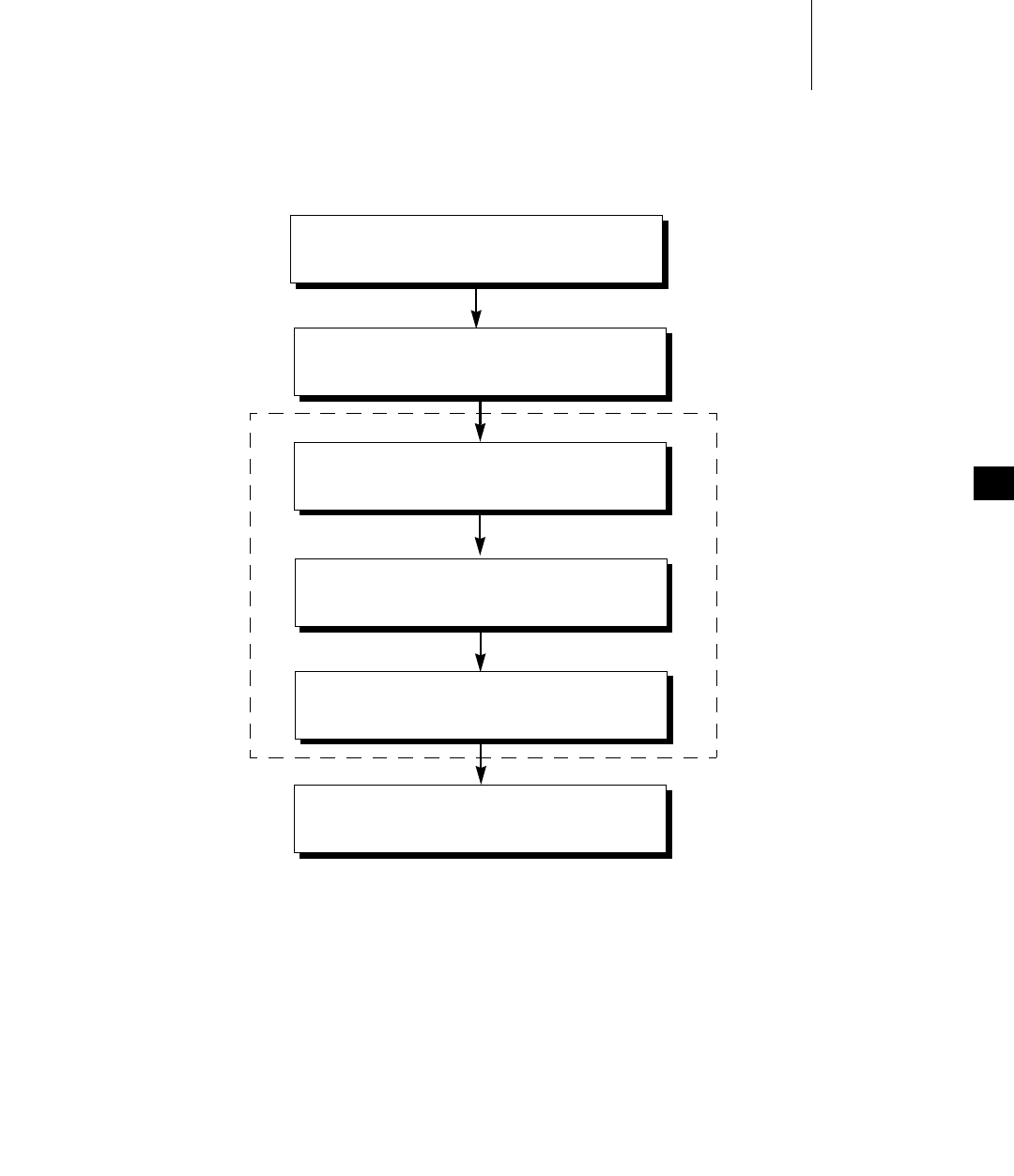

6.2.1 Task States and Transitions .................................................................... 111

Task States and State Symbols .............................................................. 112

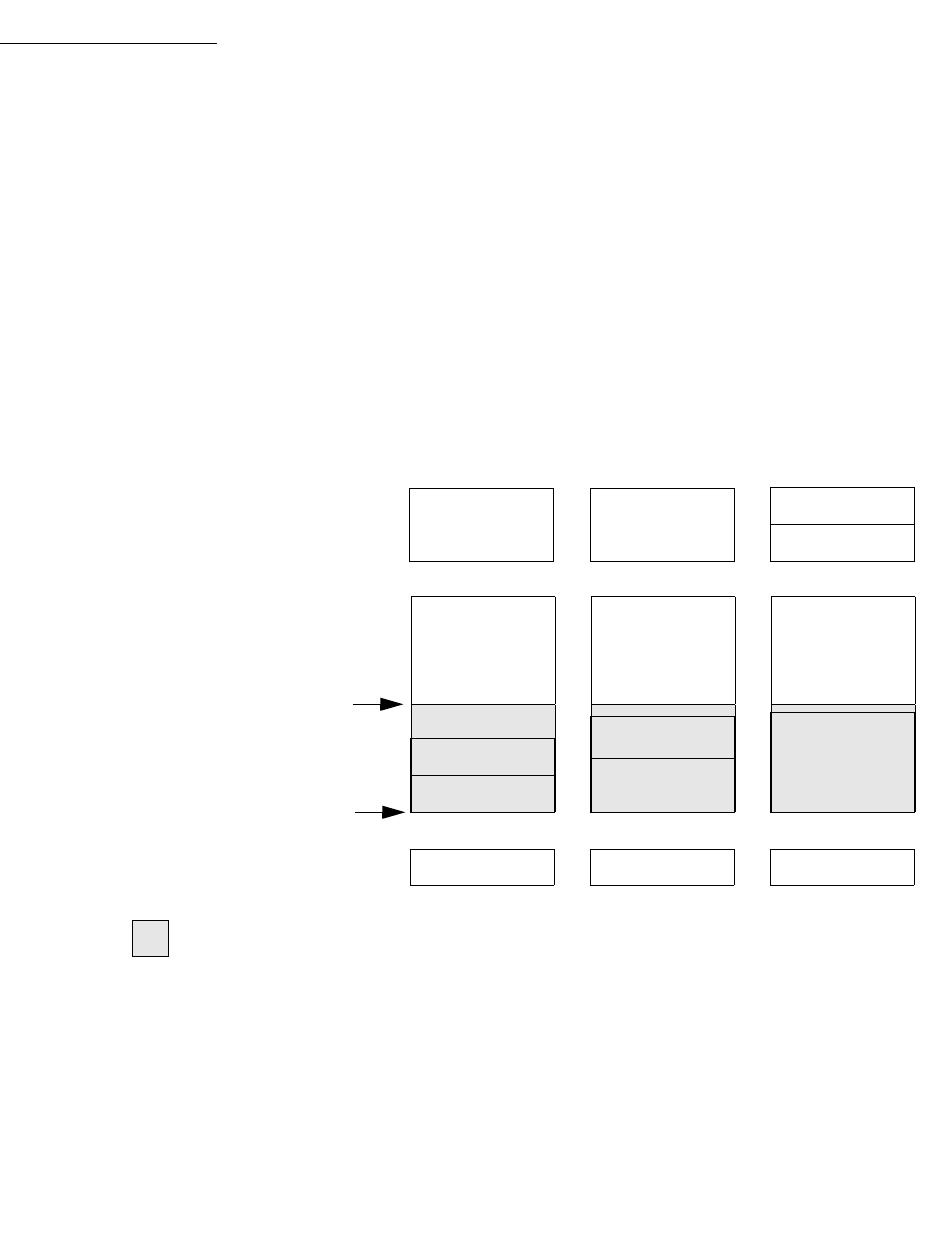

Illustration of Basic Task State Transitions ........................................... 113

6.3 Task Scheduling ..................................................................................................... 115

6.3.1 Task Priorities ........................................................................................... 115

6.3.2 Task Scheduling Control ......................................................................... 115

Task Priority .............................................................................................. 116

Preemption Locks ..................................................................................... 116

6.3.3 VxWorks Traditional Scheduler ............................................................. 117

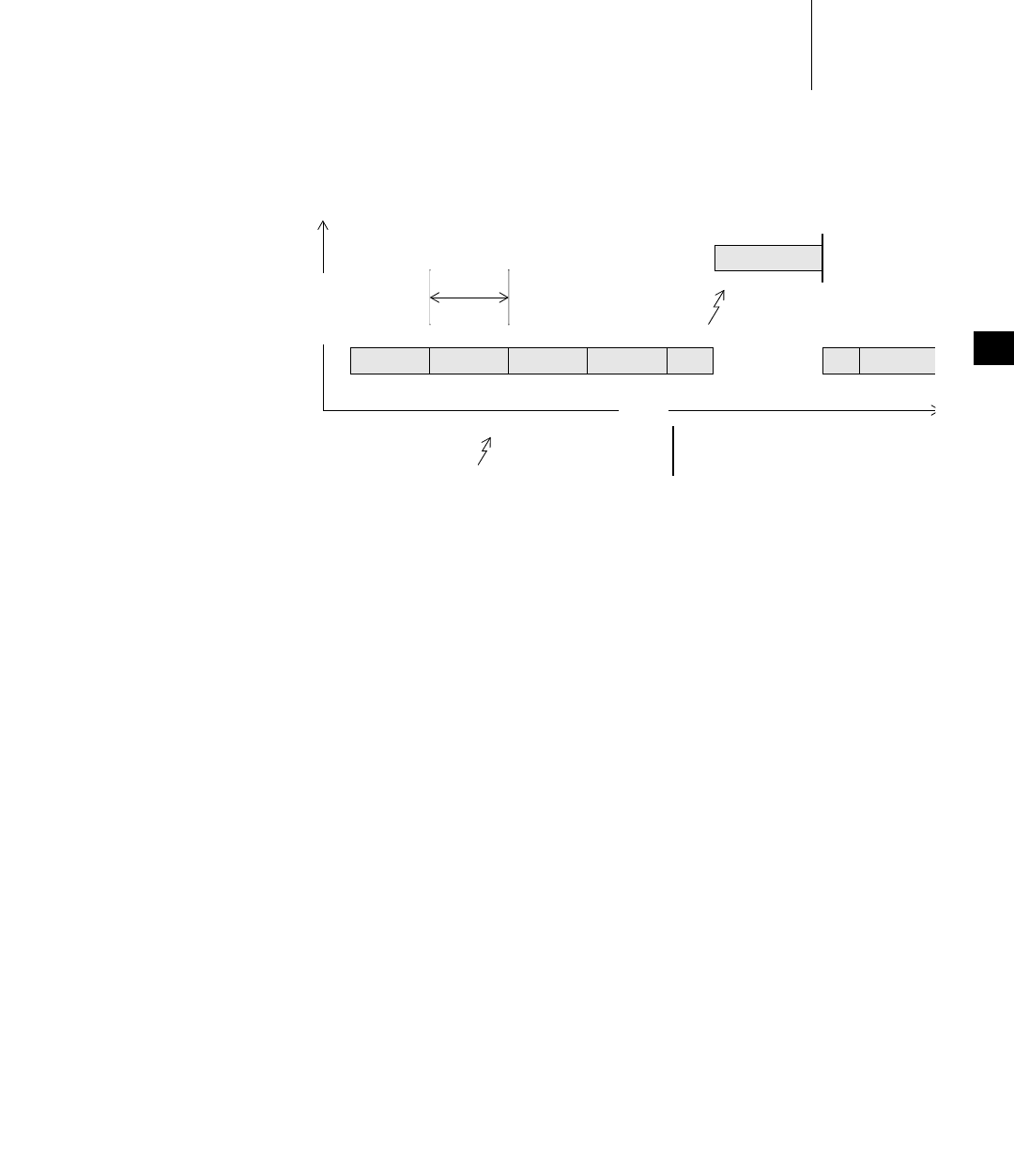

Priority-Based Preemptive Scheduling ................................................. 117

Scheduling and the Ready Queue ......................................................... 118

Round-Robin Scheduling ........................................................................ 119

6.4 Task Creation and Management ......................................................................... 121

6.4.1 Task Creation and Activation ................................................................. 121

6.4.2 Task Names and IDs ................................................................................ 122

6.4.3 Inter-Process Communication With Public Tasks ............................... 124

6.4.4 Task Creation Options ............................................................................. 124

6.4.5 Task Stack .................................................................................................. 125

Task Stack Protection ............................................................................... 126

6.4.6 Task Information ...................................................................................... 127

6.4.7 Task Deletion and Deletion Safety ......................................................... 128

6.4.8 Task Execution Control ........................................................................... 129

6.4.9 Tasking Extensions: Hook Routines ...................................................... 131

6.5 Task Error Status: errno ......................................................................................... 132

6.5.1 A Separate errno Value for Each Task .................................................. 133

6.5.2 Error Return Convention ........................................................................ 133

6.5.3 Assignment of Error Status Values ........................................................ 133

6.6 Task Exception Handling ...................................................................................... 134

6.7 Shared Code and Reentrancy ............................................................................... 134

6.7.1 Dynamic Stack Variables ......................................................................... 136

6.7.2 Guarded Global and Static Variables .................................................... 136

6.7.3 Task-Specific Variables ........................................................................... 137

Thread-Local Variables: __thread Storage Class ................................. 137

tlsOldLib and Task Variables ................................................................ 138

6.7.4 Multiple Tasks with the Same Main Routine ....................................... 138

6.8 Intertask and Interprocess Communication ...................................................... 139

6.9 Inter-Process Communication With Public Objects ....................................... 140

Creating and Naming Public and Private Objects ............................... 141

6.10 Object Ownership and Resource Reclamation ................................................. 142

6.11 Shared Data Structures .......................................................................................... 142

6.12 Mutual Exclusion .................................................................................................... 143

6.13 Semaphores

6.13.1 Inter-Process Communication With Public Semaphores

6.13.2 Semaphore Control

Options for Scalable and Inline Semaphore Routines

Static Instantiation of Semaphores

Scalable and Inline Semaphore Take and Give Routines

6.13.3 Binary Semaphores

Mutual Exclusion

Synchronization

6.13.4 Mutual-Exclusion Semaphores

Priority Inversion and Priority Inheritance

Deletion Safety

Recursive Resource Access

6.13.5 Counting Semaphores

6.13.6 Read/Write Semaphores

Specification of Read or Write Mode

Precedence for Write Access Operations

Read/Write Semaphores and System Performance

6.13.7 Special Semaphore Options

Semaphore Timeout

Semaphores and Queueing

Semaphores Interruptible by Signals

Semaphores and VxWorks Events

6.14 Message Queues

6.14.1 Inter-Process Communication With Public Message Queues

6.14.2 VxWorks Message Queue Routines

Message Queue Timeout

Message Queue Urgent Messages

Message Queues Interruptible by Signals

Message Queues and Queuing Options

6.14.3 Displaying Message Queue Attributes

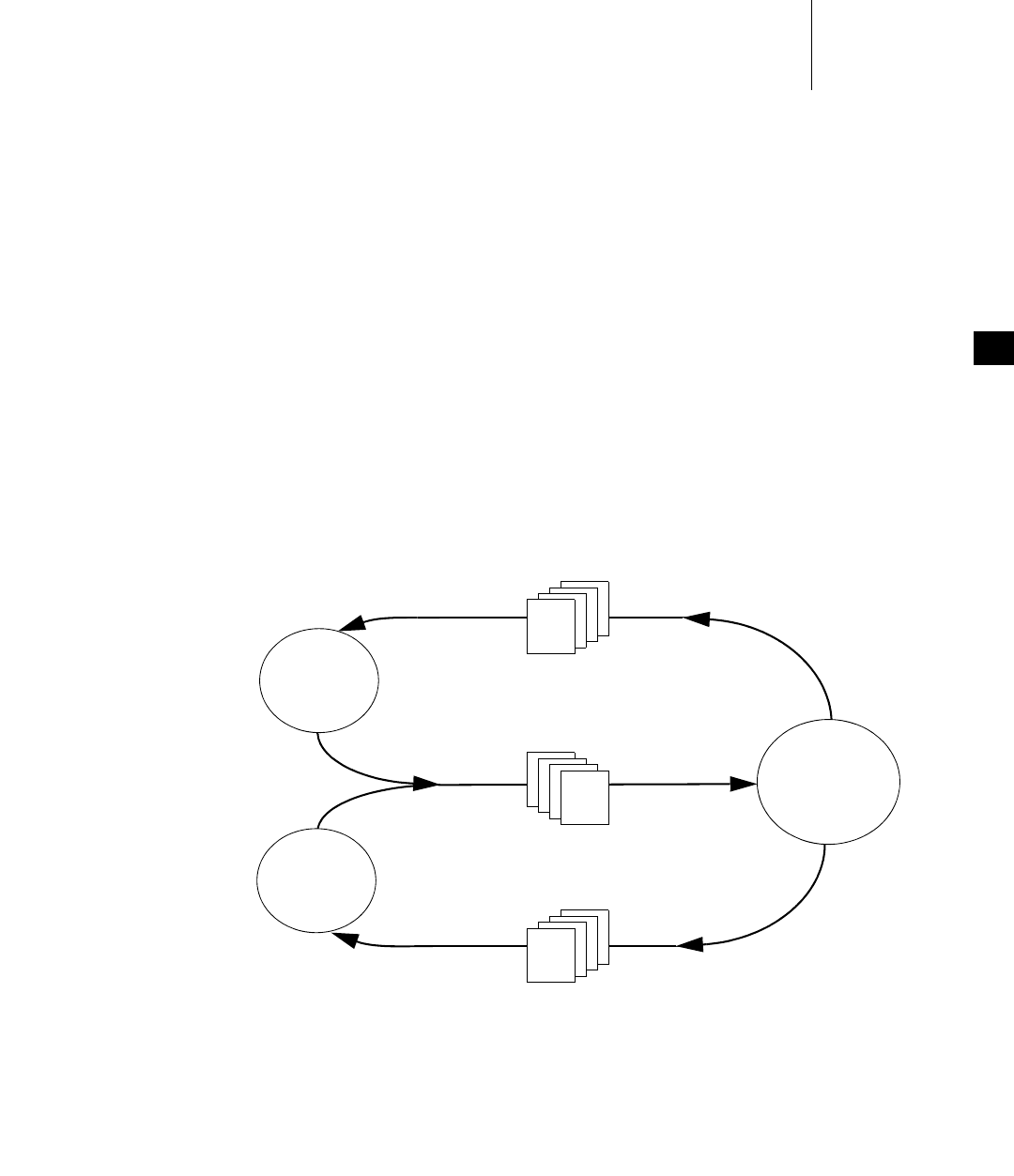

6.14.4 Servers and Clients with Message Queues

6.14.5 Message Queues and VxWorks Events

6.15 Pipes

6.16 VxWorks Events

6.16.1 Preparing a Task to Receive Events

6.16.2 Sending Events to a Task

6.16.3 Accessing Event Flags

6.16.4 Events Routines

6.16.5 Task Events Register

6.16.6 Show Routines and Events

6.17 Message Channels

6.18 Network Communication

6.19 Signals

6.19.1 Configuring VxWorks for Signals

6.19.2 Basic Signal Routines

6.19.3 Queued Signal Routines

6.19.4 Signal Events

6.19.5 Signal Handlers

6.20 Timers

6.20.1 Inter-Process Communication With Public Timers

7 POSIX Facilities

7.1 Introduction

7.2 Configuring VxWorks with POSIX Facilities

7.2.1 POSIX PSE52 Support

7.2.2 VxWorks Components for POSIX Facilities

7.3 General POSIX Support

7.4 Standard C Library: libc

7.5 POSIX Header Files

7.6 POSIX Namespace

7.7 POSIX Process Privileges

7.8 POSIX Process Support

7.9 POSIX Clocks and Timers

7.10 POSIX Asynchronous I/O

7.11 POSIX Advisory File Locking

7.12 POSIX Page-Locking Interface

7.13 POSIX Threads

7.13.1 POSIX Thread Stack Guard Zones

7.13.2 POSIX Thread Attributes

7.13.3 VxWorks-Specific Pthread Attributes

7.13.4 Specifying Attributes when Creating Pthreads

7.13.5 POSIX Thread Creation and Management

7.13.6 POSIX Thread Attribute Access

7.13.7 POSIX Thread Private Data

7.13.8 POSIX Thread Cancellation

7.14 POSIX Thread Mutexes and Condition Variables

7.14.1 Thread Mutexes

Type Mutex Attribute

Protocol Mutex Attribute

Priority Ceiling Mutex Attribute

7.14.2 Condition Variables

7.15 POSIX and VxWorks Scheduling

7.15.1 Differences in POSIX and VxWorks Scheduling

7.15.2 POSIX and VxWorks Priority Numbering

7.15.3 Default Scheduling Policy

7.15.4 VxWorks Traditional Scheduler

7.15.5 POSIX Threads Scheduler

7.15.6 POSIX Scheduling Routines

7.15.7 Getting Scheduling Parameters: Priority Limits and Time Slice

7.16 POSIX Semaphores

7.16.1 Comparison of POSIX and VxWorks Semaphores

7.16.2 Using Unnamed Semaphores

7.16.3 Using Named Semaphores

7.17 POSIX Message Queues

7.17.1 Comparison of POSIX and VxWorks Message Queues

7.17.2 POSIX Message Queue Attributes

7.17.3 Communicating Through a Message Queue

7.17.4 Notification of Message Arrival

7.18 POSIX Signals

7.19 POSIX Memory Management

7.19.1 POSIX Memory Management APIs

7.19.2 Anonymous Memory Mapping

7.19.3 Shared Memory Objects

7.19.4 Memory Mapped Files

7.19.5 Memory Protection

7.19.6 Memory Locking

7.20 POSIX Trace

Trace Events, Streams, and Logs

Trace Operation

Trace APIs

Trace Code and Record Example

8 Memory Management

8.1 Introduction

8.2 Configuring VxWorks With Memory Management

8.3 Heap and Memory Partition Management

8.4 Memory Error Detection

8.4.1 Heap and Partition Memory Instrumentation

8.4.2 Compiler Instrumentation

9 I/O System

9.1 Introduction

9.2 Configuring VxWorks With I/O Facilities

9.3 Files, Devices, and Drivers

Filenames and the Default Device

9.4 Basic I/O

9.4.1 File Descriptors

File Descriptor Table

9.4.2 Standard Input, Standard Output, and Standard Error

9.4.3 Standard I/O Redirection

9.4.4 Open and Close

9.4.5 Create and Remove

9.4.6 Read and Write

9.4.7 File Truncation

9.4.8 I/O Control

9.4.9 Pending on Multiple File Descriptors with select( )

9.4.10 POSIX File System Routines

9.5 Buffered I/O: stdio

9.5.1 Using stdio

9.5.2 Standard Input, Standard Output, and Standard Error

9.6 Other Formatted I/O

9.7 Asynchronous Input/Output

9.7.1 The POSIX AIO Routines

9.7.2 AIO Control Block

9.7.3 Using AIO

Alternatives for Testing AIO Completion

9.8 Devices in VxWorks

9.8.1 Serial I/O Devices: Terminal and Pseudo-Terminal Devices

tty Options

Raw Mode and Line Mode

tty Special Characters

9.8.2 Pipe Devices

Creating Pipes

I/O Control Functions

9.8.3 Pseudo I/O Device

I/O Control Functions

9.8.4 Network File System (NFS) Devices

I/O Control Functions for NFS Clients

9.8.5 Non-NFS Network Devices

I/O Control Functions

9.8.6 Null Devices

9.8.7 Sockets

9.8.8 Transaction-Based Reliable File System Facility: TRFS

Configuring VxWorks With TRFS

Automatic Instantiation of TRFS

Using TRFS in Applications

TRFS Code Example

10 Local File Systems

10.1 Introduction

10.2 File System Monitor

10.3 Virtual Root File System: VRFS

10.4 Highly Reliable File System: HRFS

10.4.1 Configuring VxWorks for HRFS

10.4.2 Configuring HRFS

10.4.3 HRFS and POSIX PSE52

10.4.4 Creating an HRFS File System

10.4.5 Transactional Operations and Commit Policies

10.4.6 Configuring Transaction Points at Runtime

10.4.7 File Access Time Stamps

10.4.8 Maximum Number of Files and Directories

10.4.9 Working with Directories

Creating Subdirectories

Removing Subdirectories

Reading Directory Entries

10.4.10 Working with Files

File I/O Routines

File Linking and Unlinking

File Permissions

10.4.11 I/O Control Functions Supported by HRFS

10.4.12 Crash Recovery and Volume Consistency

10.4.13 File Management and Full Devices

10.5 MS-DOS-Compatible File System: dosFs

10.5.1 Configuring VxWorks for dosFs

10.5.2 Configuring dosFs

10.5.3 Creating a dosFs File System

10.5.4 Working with Volumes and Disks

Accessing Volume Configuration Information

Synchronizing Volumes

10.5.5 Working with Directories

Creating Subdirectories

Removing Subdirectories

Reading Directory Entries

10.5.6 Working with Files

File I/O Routines

File Attributes

10.5.7 Disk Space Allocation Options

Choosing an Allocation Method

Using Cluster Group Allocation

Using Absolutely Contiguous Allocation

10.5.8 Crash Recovery and Volume Consistency

10.5.9 I/O Control Functions Supported by dosFsLib

10.5.10 Booting from a Local dosFs File System Using SCSI

10.6 Raw File System: rawFs

10.6.1 Configuring VxWorks for rawFs

10.6.2 Creating a rawFs File System

10.6.3 Mounting rawFs Volumes

10.6.4 rawFs File I/O

10.6.5 I/O Control Functions Supported by rawFsLib

10.7 CD-ROM File System: cdromFs

10.7.1 Configuring VxWorks for cdromFs

10.7.2 Creating and Using cdromFs

10.7.3 I/O Control Functions Supported by cdromFsLib

10.7.4 Version Numbers

10.8 Read-Only Memory File System: ROMFS

10.8.1 Configuring VxWorks with ROMFS

10.8.2 Building a System With ROMFS and Files

10.8.3 Accessing Files in ROMFS

10.8.4 Using ROMFS to Start Applications Automatically

10.9 Target Server File System: TSFS

Socket Support

Error Handling

Configuring VxWorks for TSFS Use

Security Considerations

Using the TSFS to Boot a Target

11 Error Detection and Reporting

11.1 Introduction

11.2 Configuring Error Detection and Reporting Facilities

11.2.1 Configuring VxWorks

11.2.2 Configuring the Persistent Memory Region

11.2.3 Configuring Responses to Fatal Errors

11.3 Error Records

11.4 Displaying and Clearing Error Records

11.5 Fatal Error Handling Options

11.5.1 Configuring VxWorks with Error Handling Options

11.5.2 Setting the System Debug Flag

Setting the Debug Flag Statically

Setting the Debug Flag Interactively

11.6 Other Error Handling Options for Processes

11.7 Using Error Reporting APIs in Application Code

11.8 Sample Error Record

A Kernel to RTP Application Migration

A.1 Introduction

A.2 Migrating Kernel Applications to Processes

A.2.1 Reducing Library Size

A.2.2 Limiting Process Scope

Communicating Between Applications

Communicating Between an Application and the Kernel

A.2.3 Using C++ Initialization and Finalization Code

A.2.4 Eliminating Hardware Access

A.2.5 Eliminating Interrupt Contexts In Processes

POSIX Signals

Watchdogs

Drivers

A.2.6 Redirecting I/O

A.2.7 Process and Task API Differences

Task Naming

Differences in Scope Between Kernel and User Modes

Task Locking and Unlocking

Private and Public Objects

A.2.8 Semaphore Differences

A.2.9 POSIX Signal Differences

Signal Generation

Signal Delivery

Scope Of Signal Handlers

Default Handling Of Signals

Default Signal Mask for New Tasks

Signals Sent to Blocked Tasks

Signal API Behavior

A.2.10 Networking Issues

Socket APIs

routeAdd( )

A.2.11 Header File Differences

A.3 Differences in Kernel and RTP APIs

A.3.1 APIs Not Present in User Mode

A.3.2 APIs Added for User Mode Only

A.3.3 APIs that Work Differently in Processes

A.3.4 ANSI and POSIX API Differences

A.3.5 Kernel Calls Require Kernel Facilities

A.3.6 Other API Differences

Index

1 Overview

1.1 Introduction

1.2 Related Documentation Resources

1.3 VxWorks Configuration and Build

1.1 Introduction

This guide describes the VxWorks operating system, and how to use VxWorks facilities in the development of real-time systems and applications. It covers the following topics:

- real-time processes (RTPs)
- RTP applications
- static libraries, shared libraries, and plug-ins
- C++ development
- multitasking facilities
- POSIX facilities
- memory management
- I/O system
- local file systems
- error detection and reporting

1.2 Related Documentation Resources

The companion volume to this book, the VxWorks Kernel Programmer’s Guide, provides material specific to kernel features and kernel-based development.

Detailed information about VxWorks libraries and routines is provided in the VxWorks API references. Information specific to target architectures is provided in the VxWorks BSP references and in the VxWorks Architecture Supplement.

For information about BSP and driver development, see the VxWorks BSP Developer’s Guide and the VxWorks Device Driver Guide.

The VxWorks networking facilities are documented in the Wind River Network Stack Programmer’s Guide and the VxWorks PPP Programmer’s Guide.

For information about migrating applications, BSPs, drivers, and projects from previous versions of VxWorks and the host development environment, see the VxWorks Migration Guide and the Wind River Workbench Migration Guide.

The Wind River IDE and command-line tools are documented in the Wind River Workbench by Example guide, the VxWorks Command-Line Tools User’s Guide, the Wind River compiler and GNU compiler guides, and the Wind River tools API and command-line references.

NOTE: This book provides information about facilities available for real-time processes. For information about facilities available in the VxWorks kernel, see the VxWorks Kernel Programmer’s Guide.


1.3 VxWorks Configuration and Build

This document describes VxWorks features; it does not go into detail about the

mechanisms by which VxWorks-based systems and applications are configured

and built. The tools and procedures used for configuration and build are described

in the Wind River Workbench by Example guide and the VxWorks Command-Line Tools

User’s Guide.

NOTE: In this guide, as well as in the VxWorks API references, VxWorks

components and their configuration parameters are identified by the names used

in component description files. The names take the form, for example, of

INCLUDE_FOO and NUM_FOO_FILES (for components and parameters,

respectively).

You can use these names directly to configure VxWorks using the command-line

configuration facilities.

Wind River Workbench displays descriptions of components and parameters, as

well as their names, in the Components tab of the Kernel Configuration Editor.

You can use the Find dialog to locate a component or parameter by its name or description. To open the Find dialog from the Components tab, press CTRL+F, or right-click and select Find.

2 Real-Time Processes

2.1 Introduction

VxWorks real-time processes (RTPs) are in many respects similar to processes in other operating systems, such as UNIX and Linux, including extensive POSIX compliance (see footnote 1). The ways in which they are created, execute applications, and terminate will be familiar to developers who understand the UNIX process model.

The VxWorks process model is, however, designed for use with real-time

embedded systems. The features that support this model include system-wide

scheduling of tasks (processes themselves are not scheduled), preemption of

processes in kernel mode as well as user mode, process creation in two steps to

separate loading from instantiation, and loading applications in their entirety.

VxWorks real-time processes provide the means for executing applications in user

mode. Each process has its own address space, which contains the executable

program, the program’s data, stacks for each task, the heap, and resources

associated with the management of the process itself (such as memory-allocation

tracking). Many processes may be present in memory at once, and each process

may contain more than one task (sometimes known as a thread in other operating

systems).

VxWorks processes can operate with two different virtual memory models: flat

(the default) or overlapped (optional). With the flat virtual-memory model each

VxWorks process has its own region of virtual memory described by a unique

range of addresses. This model provides advantages in execution speed, in a

programming model that accommodates systems with and without an MMU, and

in debugging applications. With the overlapped virtual-memory model, each

VxWorks process uses the same range of virtual addresses for the area in which its

code (text, data, and bss segments) resides. This model provides more precise

control over the virtual memory space and allows for notably faster application

load time.
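The difference between the two models can be illustrated with a small address-range model. This is only a sketch and not VxWorks code: the `region` type, the function names, and the fixed-size "slot" scheme for the flat model are hypothetical simplifications chosen for illustration, and the addresses used with them are arbitrary.

```c
#include <stdint.h>

/* Hypothetical description of a virtual address range (not a VxWorks type). */
typedef struct {
    uintptr_t start;   /* first address of the range */
    uintptr_t size;    /* length of the range in bytes */
} region;

/* Return nonzero if the two ranges share at least one address. */
int regions_overlap(region a, region b)
{
    return a.start < b.start + b.size && b.start < a.start + a.size;
}

/*
 * Flat model (simplified): process number `index` gets its own slot,
 * so every process is described by a unique range of addresses.
 */
region flat_code_region(uintptr_t base, uintptr_t slot, unsigned index)
{
    region r;
    r.start = base + (uintptr_t)index * slot;
    r.size = slot;
    return r;
}

/*
 * Overlapped model: every process uses the same range of virtual
 * addresses for its text, data, and bss segments.
 */
region overlapped_code_region(uintptr_t base, uintptr_t size)
{
    region r;
    r.start = base;
    r.size = size;
    return r;
}
```

In this model, two processes laid out with `flat_code_region()` never collide, while two processes laid out with `overlapped_code_region()` report identical ranges; in a real system the MMU maps those identical virtual ranges to different physical memory.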

For information about developing RTP applications, see 3. RTP Applications.

Footnote 1: VxWorks can be configured to provide POSIX PSE52 support for individual processes.


2.2 About Real-time Processes

A common definition of a process is “a program in execution,” and VxWorks

processes are no different in this respect. In fact, the life-cycle of VxWorks real-time

processes is largely consistent with the POSIX process model (see 2.2.9 RTPs and

POSIX, p.16).

VxWorks processes, however, are called real-time processes (RTPs) precisely

because they are designed to support the determinism required of real-time

systems. They do so in the following ways:

■The VxWorks task-scheduling model is maintained. Processes are not

scheduled—tasks are scheduled globally throughout the system.

■Processes can be preempted in kernel mode as well as in user mode. Every task

has both a user mode and a kernel mode stack. (The VxWorks kernel is fully

preemptive.)

■Processes are created without the overhead of performing a copy of the

address space for the new process and then performing an exec operation to

load the file. With VxWorks, a new address space is simply created and the file

loaded.

■Process creation takes place in two phases that clearly separate instantiation of

the process from loading and executing the application. The first phase is

performed in the context of the task that calls rtpSpawn( ). The second phase

is carried out by a separate task that bears the cost of loading the application

text and data before executing it, and which operates at its own priority level

distinct from the parent task. The parent task, which called rtpSpawn( ), is not

impacted and does not have to wait for the application to begin execution, unless

it has been coded to wait.

■Processes load applications in their entirety—there is no demand paging.

All of these differences are designed to make VxWorks particularly suitable for

hard real-time applications by ensuring determinism, as well as providing a

common programming model for systems that run with an MMU and those that

do not. As a result, there are differences between the VxWorks process model and

that of server-style operating systems such as UNIX and Linux. The reasons for

these differences are discussed as the relevant topic arises throughout this chapter.


2.2.1 RTPs and Scheduling

The primary way in which VxWorks processes support determinism is that they themselves are simply not scheduled. Only tasks are scheduled in VxWorks systems, using a priority-based, preemptive policy. Because the VxWorks kernel is itself fully preemptible, the highest priority task in the system that is ready to run executes at any given time, regardless of whether the task is in the kernel or in any process in the system.

By way of contrast, the scheduling policy for non-real-time systems is based on

time-sharing, as well as a dynamic determination of process priority that ensures

that no process is denied use of the CPU for too long, and that no process

monopolizes the CPU.

VxWorks does provide an optional time-sharing capability, round-robin scheduling, but it does not interfere with priority-based preemption, and is therefore deterministic. VxWorks round-robin scheduling simply ensures that when more than one task with the highest priority is ready to run at the same time, the CPU is shared between those tasks. No single one of them, therefore, can hold the processor until it blocks.

For more information about VxWorks scheduling see 6.3 Task Scheduling, p.115.
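The selection rule described in this section can be modeled in a few lines of C. The sketch below is not VxWorks code: the `model_task` structure and the `pick_next()` function are hypothetical names invented for illustration, and the real scheduler is considerably more involved. It assumes the VxWorks convention that priority 0 is the highest and 255 the lowest.

```c
#include <stddef.h>

/* Hypothetical model of a schedulable task (not a VxWorks structure). */
typedef struct {
    const char *name;   /* label, for illustration only */
    int owner;          /* 0 = kernel, otherwise an arbitrary RTP number */
    int priority;       /* 0 is the highest priority, 255 the lowest */
    int ready;          /* nonzero if the task is ready to run */
} model_task;

/*
 * Return the index of the task that should run next, or -1 if no task
 * is ready. `last` is the index of the task that ran most recently
 * (-1 if none). The owner field is deliberately ignored: tasks are
 * selected system-wide, not per process.
 */
int pick_next(const model_task *tasks, int count, int last, int round_robin)
{
    int best = -1;
    int i;

    /* Find the highest priority (lowest number) among the ready tasks. */
    for (i = 0; i < count; i++) {
        if (tasks[i].ready &&
            (best < 0 || tasks[i].priority < tasks[best].priority)) {
            best = i;
        }
    }
    if (best < 0) {
        return -1;
    }

    /* Without round-robin, the running task keeps the CPU until it blocks
     * or a higher-priority task becomes ready. */
    if (!round_robin && last >= 0 && tasks[last].ready &&
        tasks[last].priority == tasks[best].priority) {
        return last;
    }

    /* With round-robin, rotate among the ready tasks of equal top priority,
     * starting with the one after `last` and wrapping around. */
    for (i = 1; i <= count; i++) {
        int idx = (last + i) % count;
        if (tasks[idx].ready && tasks[idx].priority == tasks[best].priority) {
            return idx;
        }
    }
    return best;
}
```

With round-robin enabled, calling `pick_next()` at each time-slice expiry cycles through the tasks that share the highest ready priority; lower-priority tasks are chosen only when all of those tasks are blocked.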

2.2.2 RTP Creation

The manner in which real-time processes are created supports the determinism

required of real-time systems. The creation of an RTP takes place in two distinct

phases, and the executable is loaded in its entirety when the process is created. In

the first phase, the rtpSpawn( ) call creates the process object in the system,

allocates virtual and physical memory to it, and creates the initial process task (see

2.2.5 RTPs and Tasks, p.11). In the second phase, the initial process task loads the

entire executable and starts the main routine.

This approach provides for system determinism in two ways:

■First, the work of process creation is divided between the rtpSpawn( ) task and

the initial process task—each of which has its own distinct task priority. This

means that the activity of loading applications does not occur at the priority,

or with the CPU time, of the task requesting the creation of the new process.

Therefore, the initial phase of starting a process is discrete and deterministic,

regardless of the application that is going to run in it. And for the second

phase, the developer can assign the task priority appropriate to the

significance of the application, or to take into account necessarily


indeterministic constraints on loading the application (for example, if the

application is loaded from a networked host system or a local disk). The

application is loaded with the same task priority as the priority with which it

will run. In a way, this model is analogous to asynchronous I/O, as the task

that calls rtpSpawn( ) just initiates starting the process and can concurrently

perform other activities while the application is being loaded and started.

■Second, the entire application executable is loaded when the process is created,

which means that the determinism of its execution is not compromised by

incremental loading during execution. This feature is obviously useful when

systems are configured to start applications automatically at boot time—all

executables are fully loaded and ready to execute when the system comes up.

The rtpSpawn( ) routine has an option that makes the calling task wait until the new process has been successfully loaded and instantiated.
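A minimal sketch of starting a process and waiting for it to be loaded might look like the following. Several things here are assumptions to verify against the rtpLib API reference: the argument order of rtpSpawn( ), the option name `RTP_LOADED_WAIT`, and the error value `RTP_ID_ERROR` are written from memory of that reference. The helper `start_app()` is a hypothetical name, and the `#else` branch contains host-only placeholders so that the sketch compiles outside VxWorks; those placeholders are not the real definitions.

```c
#include <stddef.h>
#include <stdio.h>

#ifdef __VXWORKS__
#include <vxWorks.h>
#include <rtpLib.h>
#else
/* Host-only stand-ins (hypothetical placeholders, not the rtpLib.h
 * definitions) so that the sketch can be compiled outside VxWorks. */
typedef long RTP_ID;
#define RTP_ID_ERROR    ((RTP_ID)-1)
#define RTP_LOADED_WAIT 0x1   /* placeholder value */

static RTP_ID rtpSpawn(const char *file, const char *argv[],
                       const char *envp[], int priority, size_t stackSize,
                       int options, int taskOptions)
{
    (void)argv; (void)envp; (void)priority;
    (void)stackSize; (void)options; (void)taskOptions;
    /* Pretend that an empty file name cannot be loaded. */
    return (file != NULL && file[0] != '\0') ? (RTP_ID)1 : RTP_ID_ERROR;
}
#endif

/*
 * Start the application at `path`. The initial task of the new process
 * runs at `priority`, which is also the priority at which the
 * executable is loaded. Returns 0 on success and -1 on failure.
 */
int start_app(const char *path, int priority, size_t stackSize)
{
    const char *argv[2];
    const char *envp[1];
    RTP_ID id;

    if (path == NULL) {
        return -1;
    }
    argv[0] = path;   /* by convention, argv[0] is the program name */
    argv[1] = NULL;
    envp[0] = NULL;   /* no environment variables in this sketch */

    /* Assumed option: block the caller until loading has completed. */
    id = rtpSpawn(path, argv, envp, priority, stackSize, RTP_LOADED_WAIT, 0);
    if (id == RTP_ID_ERROR) {
        fprintf(stderr, "rtpSpawn failed for %s\n", path);
        return -1;
    }
    return 0;
}
```

For example, `start_app("/romfs/demo.vxe", 100, 0x10000)` would request a process whose initial task runs at priority 100 with a 64 KB stack; the path and the values are illustrative only. Without the wait option, the caller would return as soon as the first phase completes and could do other work while the application loads.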

At startup time, the resources internally required for the process (such as the heap)

are allocated on demand. The application's text is guaranteed to be

write-protected, and the application's data readable and writable, as long as an

MMU is present and the operating system is configured to manage it. While

memory protection is provided by MMU-enforced partitions between processes,

there is no mechanism to provide resource protection by limiting memory usage

of processes to a specified amount. For more information, see 8. Memory

Management.

Note that creation of VxWorks processes involves no copying or sharing of the

parent process’s page frames (copy-on-write), as is the case with some versions of

UNIX and Linux. The flat virtual memory model provided by VxWorks prohibits

this approach and the overlapped virtual memory model does not currently

support this feature. For information about the issue of inheritance of attributes

from parent processes, see 2.2.7 RTPs, Inheritance, Zombies, and Resource

Reclamation, p.13.

For information about what operations are possible on a process in each phase of

its instantiation, see the VxWorks API reference for rtpLib. Also see 3.3.7 Using

Hook Routines, p.50.

VxWorks processes can be started in the following ways:

■interactively from the kernel shell

■interactively from the host shell and debugger

■automatically at boot time, using a startup facility

■programmatically from applications or the kernel


For more information in this regard, see 3.6 Executing RTP Applications, p.54.

2.2.3 RTP Termination

Processes are terminated under the following circumstances:

■When the last task in the process exits.

■If any task in the process calls exit( ), regardless of whether or not other tasks

are running in the process.

■If the process’ main( ) routine returns.

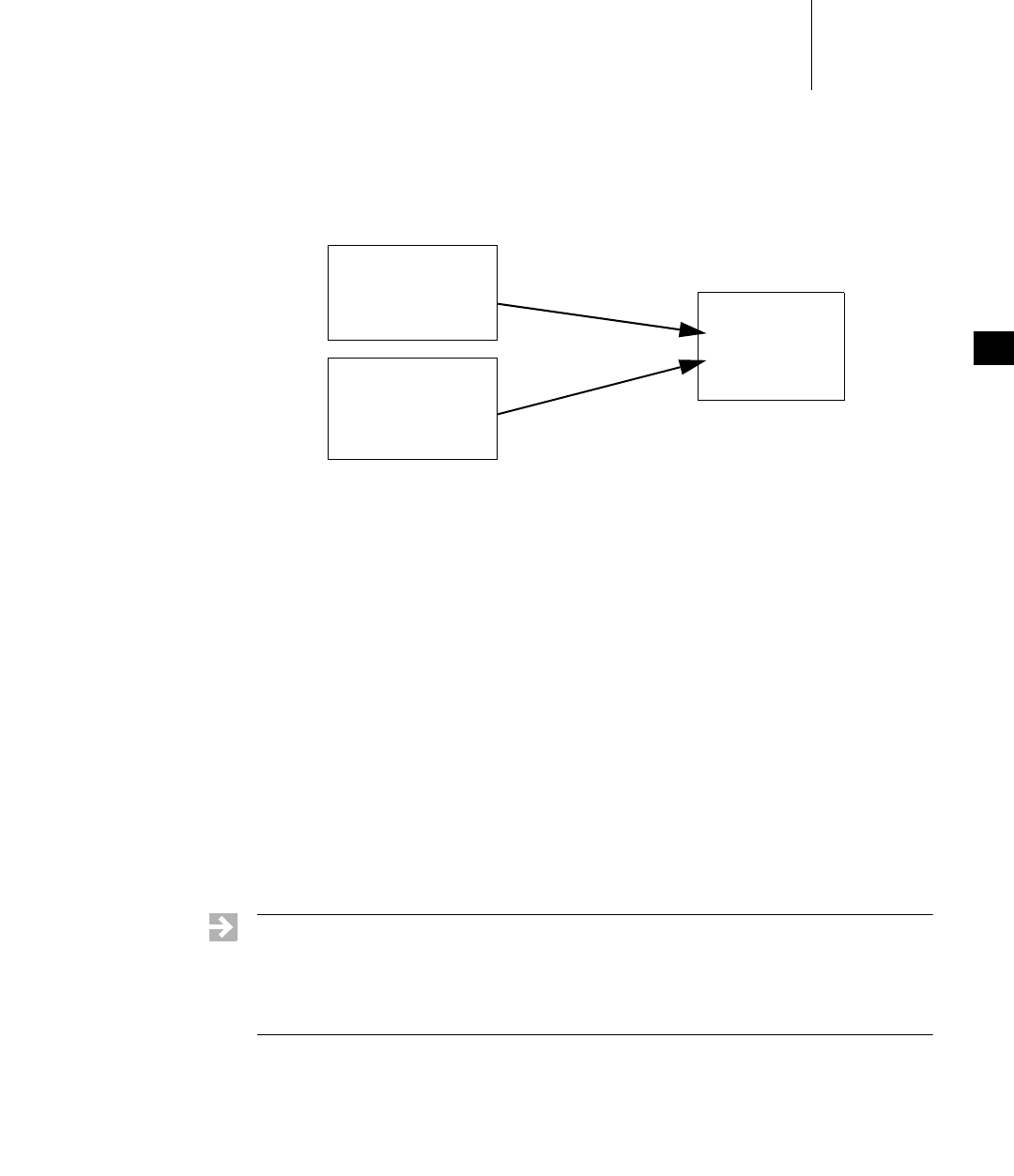

This is because exit( ) is called implicitly when main( ) returns. An application