

A Beginner’s Guide to Structural Equation Modeling

Third Edition

Randall E. Schumacker, The University of Alabama

Richard G. Lomax, The Ohio State University


Routledge

Taylor & Francis Group

711 Third Avenue

New York, NY 10017

Routledge

Taylor & Francis Group

27 Church Road

Hove, East Sussex BN3 2FA

© 2010 by Taylor and Francis Group, LLC

Routledge is an imprint of Taylor & Francis Group, an Informa business

International Standard Book Number: 978-1-84169-890-8 (Hardback) 978-1-84169-891-5 (Paperback)

For permission to photocopy or use material electronically from this work, please access www.copyright.com (http://www.copyright.com/) or contact the Copyright Clearance Center, Inc. (CCC), 222 Rosewood Drive, Danvers, MA 01923, 978-750-8400. CCC is a not-for-profit organization that provides licenses and registration for a variety of users. For organizations that have been granted a photocopy license by the CCC, a separate system of payment has been arranged.

Trademark Notice: Product or corporate names may be trademarks or registered trademarks, and are used only for identification and explanation without intent to infringe.

Library of Congress Cataloging-in-Publication Data

Schumacker, Randall E.

A beginner’s guide to structural equation modeling / authors, Randall E. Schumacker, Richard G. Lomax.-- 3rd ed.

p. cm.

Includes bibliographical references and index.

ISBN 978-1-84169-890-8 (hardcover : alk. paper) -- ISBN 978-1-84169-891-5 (pbk. : alk. paper)

1. Structural equation modeling. 2. Social sciences--Statistical methods. I. Lomax, Richard G. II. Title.

QA278.S36 2010

519.5’3--dc22 2010009456

Visit the Taylor & Francis Web site at

http://www.taylorandfrancis.com

and the Psychology Press Web site at

http://www.psypress.com


Contents

About the Authors ...........................................................................................xv

Preface ............................................................................................................. xvii

1 Introduction ................................................................................................1

1.1 What Is Structural Equation Modeling? .......................................2

1.2 History of Structural Equation Modeling .................................... 4

1.3 Why Conduct Structural Equation Modeling? ............................ 6

1.4 Structural Equation Modeling Software Programs ....................8

1.5 Summary ......................................................................................... 10

References .................................................................................................. 11

2 Data Entry and Data Editing Issues ..................................................... 13

2.1 Data Entry ....................................................................................... 14

2.2 Data Editing Issues ........................................................................ 18

2.2.1 Measurement Scale ........................................................... 18

2.2.2 Restriction of Range ......................................................... 19

2.2.3 Missing Data ...................................................................... 20

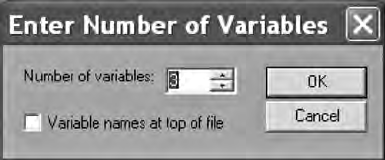

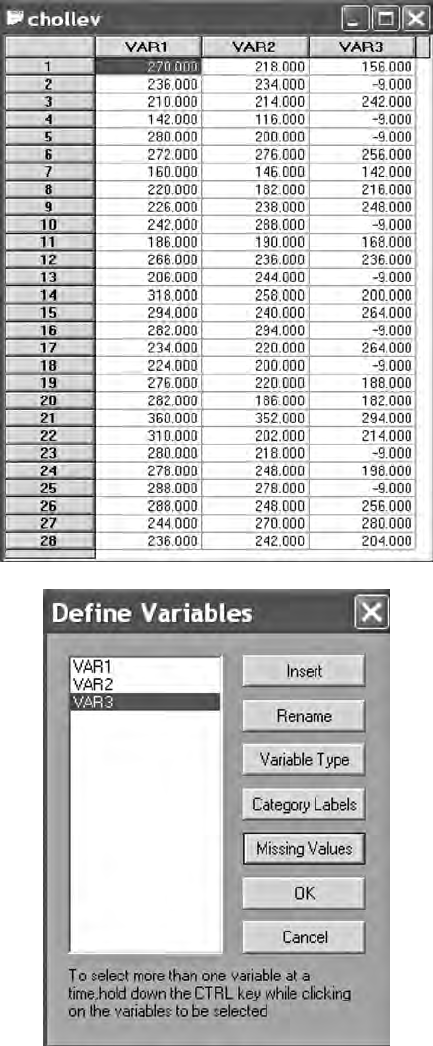

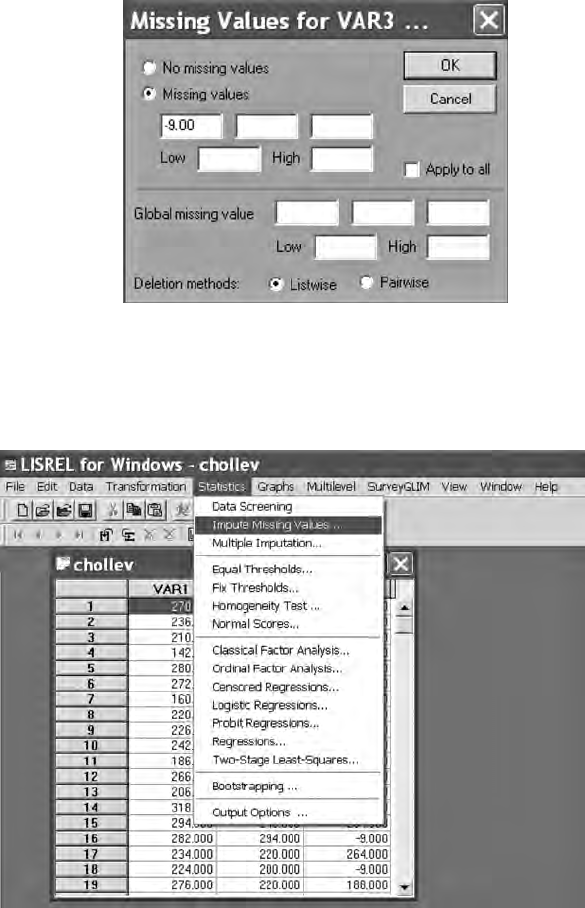

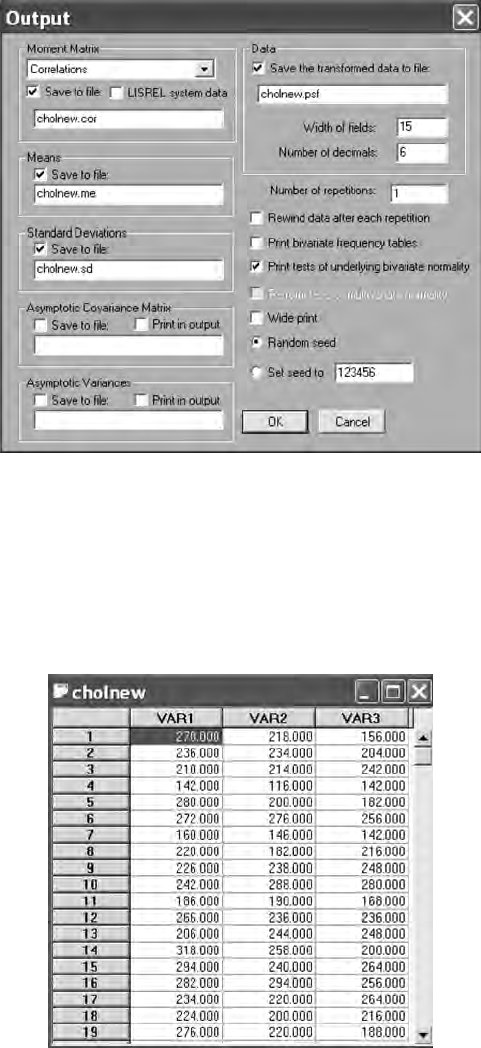

2.2.4 LISREL–PRELIS Missing Data Example........................ 21

2.2.5 Outliers ............................................................................... 27

2.2.6 Linearity ............................................................................. 27

2.2.7 Nonnormality .................................................................... 28

2.3 Summary ......................................................................................... 29

References .................................................................................................. 31

3 Correlation ................................................................................................33

3.1 Types of Correlation Coefficients .................................................33

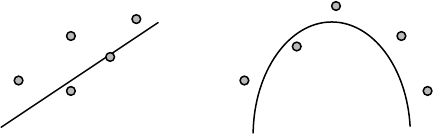

3.2 Factors Affecting Correlation Coefficients ................................. 35

3.2.1 Level of Measurement and Range of Values ................. 35

3.2.2 Nonlinearity ...................................................................... 36

3.2.3 Missing Data ......................................................................38

3.2.4 Outliers ............................................................................... 39

3.2.5 Correction for Attenuation .............................................. 39

3.2.6 Nonpositive Definite Matrices ........................................ 40

3.2.7 Sample Size ........................................................................ 41

3.3 Bivariate, Part, and Partial Correlations .....................................42

3.4 Correlation versus Covariance .....................................................46

3.5 Variable Metrics (Standardized versus Unstandardized) ........ 47

3.6 Causation Assumptions and Limitations ...................................48

3.7 Summary ......................................................................................... 49

References .................................................................................................. 51


4 SEM Basics ................................................................................................ 55

4.1 Model Specification ........................................................................55

4.2 Model Identification ....................................................................... 56

4.3 Model Estimation ........................................................................... 59

4.4 Model Testing ................................................................................. 63

4.5 Model Modification ....................................................................... 64

4.6 Summary ......................................................................................... 67

References .................................................................................................. 69

5 Model Fit .................................................................................................... 73

5.1 Types of Model-Fit Criteria ........................................................... 74

5.1.1 LISREL–SIMPLIS Example ..............................................77

5.1.1.1 Data .....................................................................77

5.1.1.2 Program ..............................................................80

5.1.1.3 Output ................................................................. 81

5.2 Model Fit ..........................................................................................85

5.2.1 Chi-Square (χ2) .................................................................. 85

5.2.2 Goodness-of-Fit Index (GFI) and Adjusted Goodness-of-Fit Index (AGFI) .........................................86

5.2.3 Root-Mean-Square Residual Index (RMR) .................... 87

5.3 Model Comparison ........................................................................ 88

5.3.1 Tucker–Lewis Index (TLI) ................................................ 88

5.3.2 Normed Fit Index (NFI) and Comparative Fit Index (CFI) .........................................................................88

5.4 Model Parsimony ........................................................................... 89

5.4.1 Parsimony Normed Fit Index (PNFI) ............................. 90

5.4.2 Akaike Information Criterion (AIC) .............................. 90

5.4.3 Summary ............................................................................ 91

5.5 Parameter Fit ................................................................................... 92

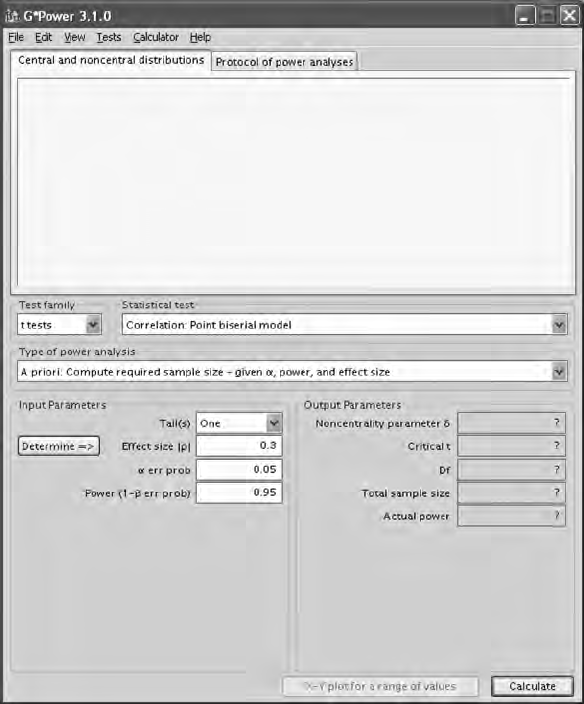

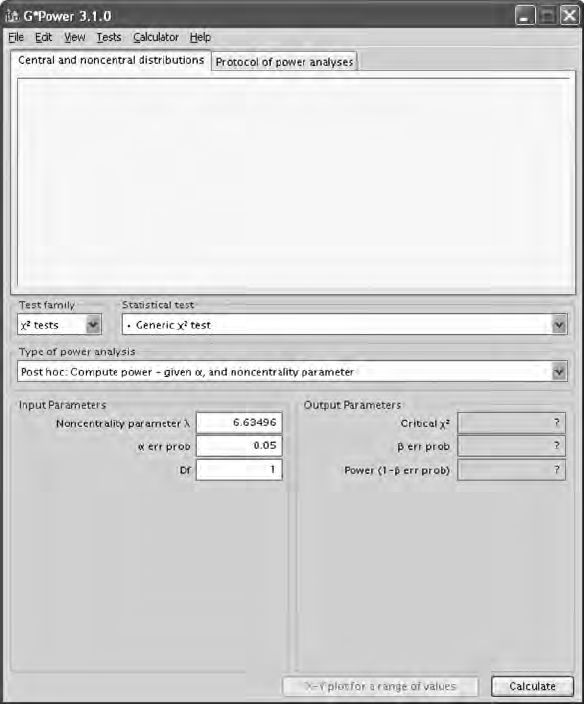

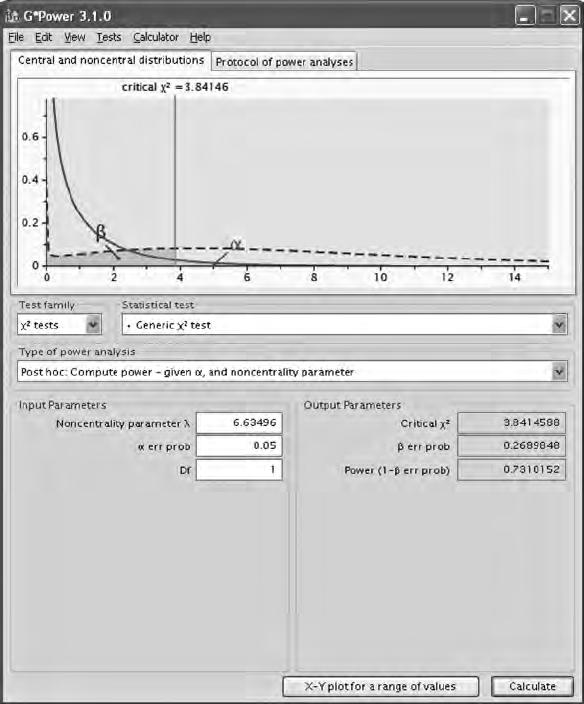

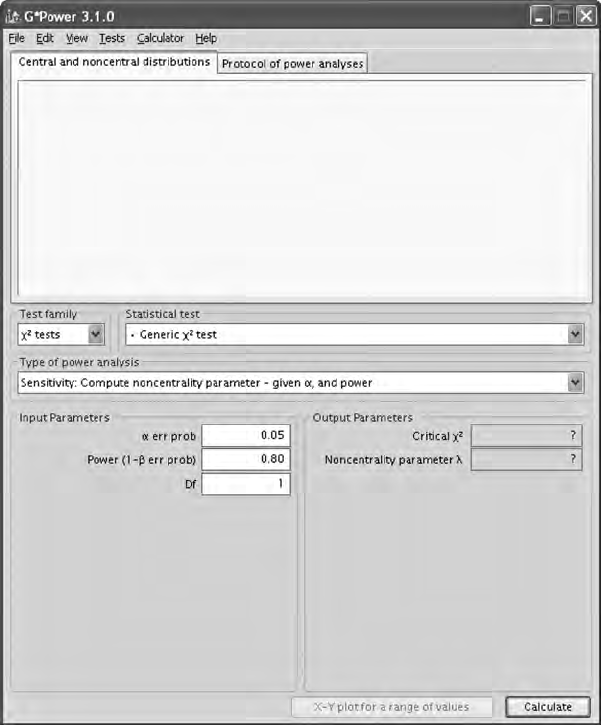

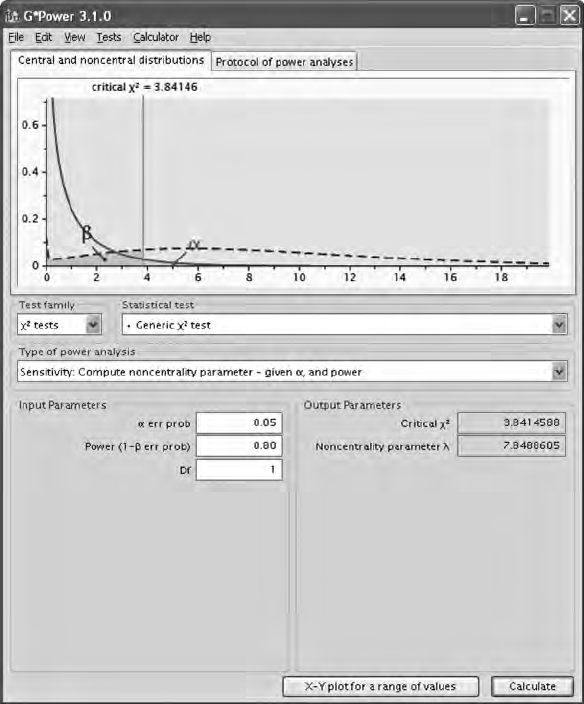

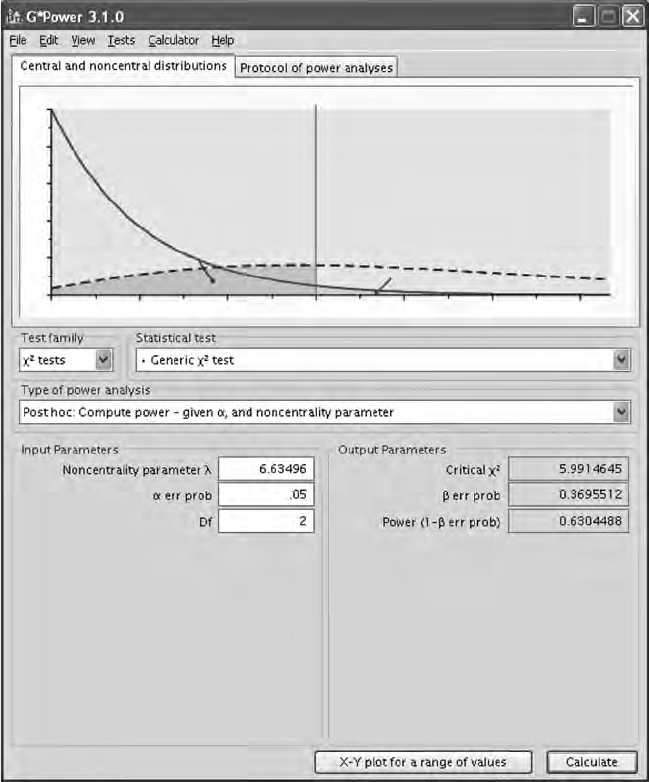

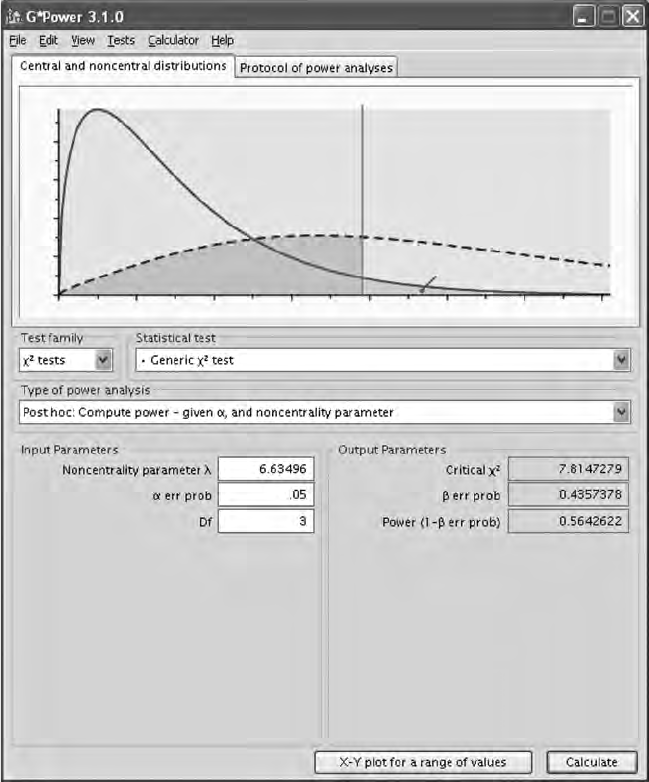

5.6 Power and Sample Size ................................................................. 93

5.6.1 Model Fit ............................................................................ 94

5.6.1.1 Power ................................................................... 94

5.6.1.2 Sample Size ........................................................99

5.6.2 Model Comparison ......................................................... 108

5.6.3 Parameter Significance ....................................................111

5.6.4 Summary ...........................................................................113

5.7 Two-Step Versus Four-Step Approach to Modeling ................114

5.8 Summary ........................................................................................116

Chapter Footnote .....................................................................................118

Standard Errors ........................................................................................118

Chi-Squares ...............................................................................................118

References ................................................................................................ 120


6 Regression Models ................................................................................ 125

6.1 Overview ....................................................................................... 126

6.2 An Example ................................................................................... 130

6.3 Model Specification ...................................................................... 130

6.4 Model Identification ..................................................................... 131

6.5 Model Estimation ......................................................................... 131

6.6 Model Testing ............................................................................... 133

6.7 Model Modification ..................................................................... 134

6.8 Summary ....................................................................................... 135

6.8.1 Measurement Error ......................................................... 136

6.8.2 Additive Equation ........................................................... 137

Chapter Footnote .................................................................................... 138

Regression Model with Intercept Term ..................................... 138

LISREL–SIMPLIS Program (Intercept Term) ...................................... 138

References ................................................................................................ 139

7 Path Models ............................................................................................ 143

7.1 An Example ................................................................................... 144

7.2 Model Specification ...................................................................... 147

7.3 Model Identification ..................................................................... 150

7.4 Model Estimation ......................................................................... 151

7.5 Model Testing ............................................................................... 154

7.6 Model Modification ..................................................................... 155

7.7 Summary ....................................................................................... 156

Appendix: LISREL–SIMPLIS Path Model Program ........................... 156

Chapter Footnote .................................................................................... 158

Another Traditional Non-SEM Path Model-Fit Index ............ 158

LISREL–SIMPLIS program ......................................................... 158

References .................................................................................................161

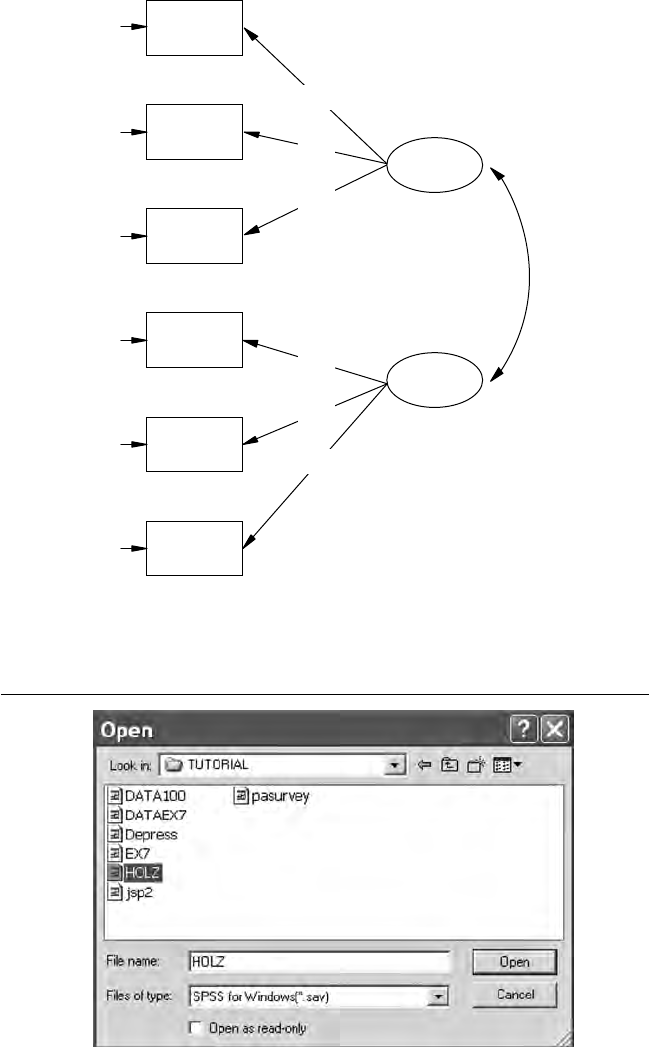

8 Confirmatory Factor Models ............................................................... 163

8.1 An Example ................................................................................... 164

8.2 Model Specification ...................................................................... 166

8.3 Model Identification ......................................................................167

8.4 Model Estimation ......................................................................... 169

8.5 Model Testing ............................................................................... 170

8.6 Model Modification ..................................................................... 173

8.7 Summary ........................................................................................174

Appendix: LISREL–SIMPLIS Confirmatory Factor Model Program ....174

References ................................................................................................ 177

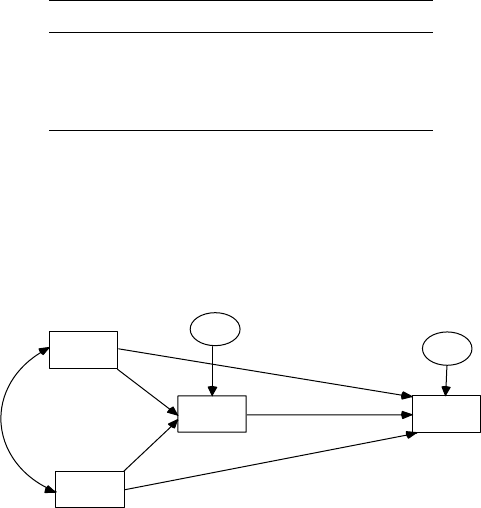

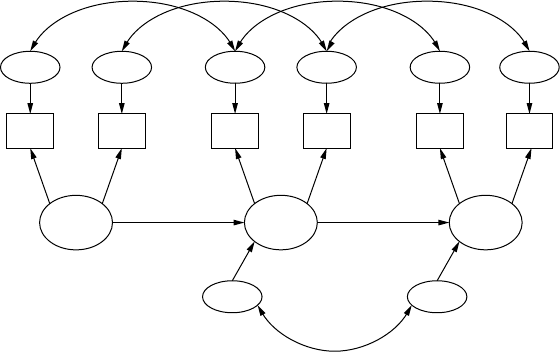

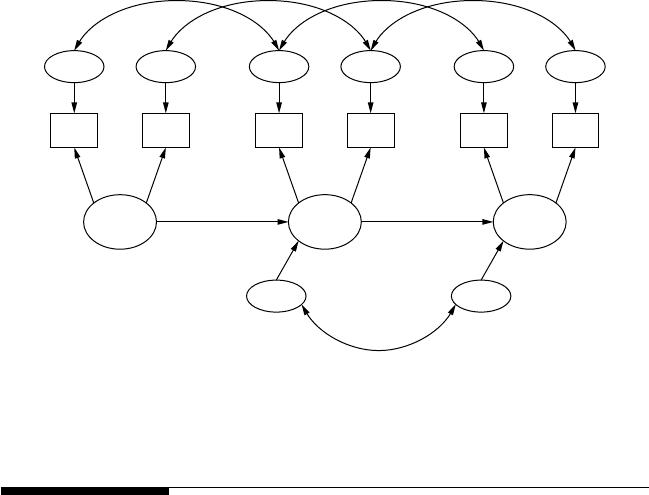

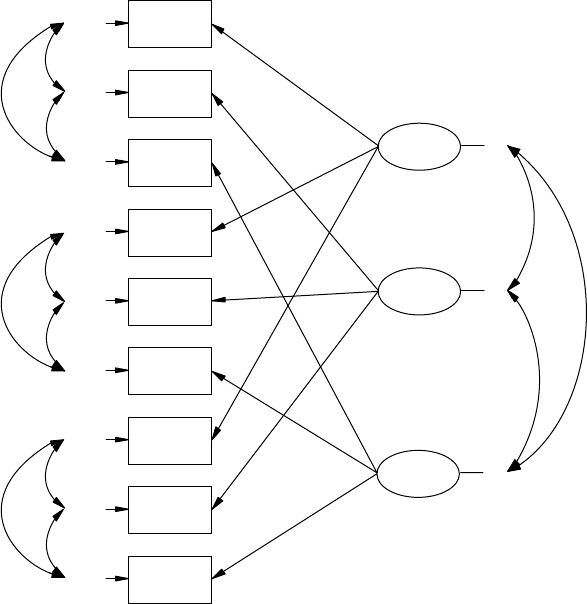

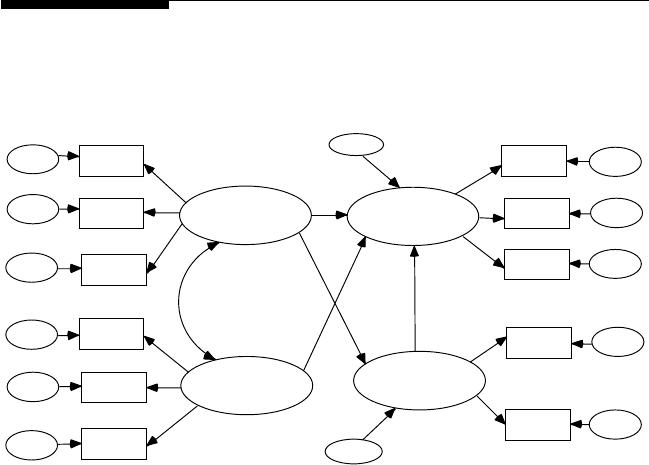

9 Developing Structural Equation Models: Part I.............................. 179

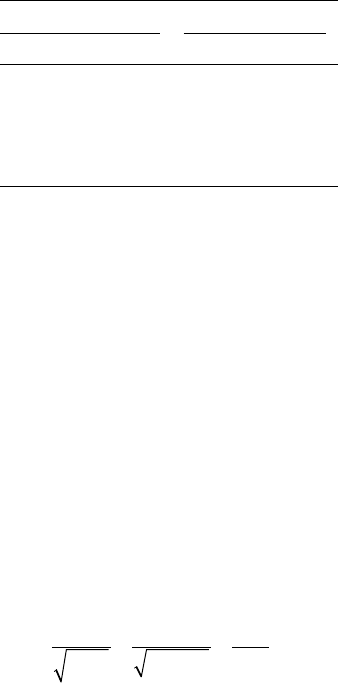

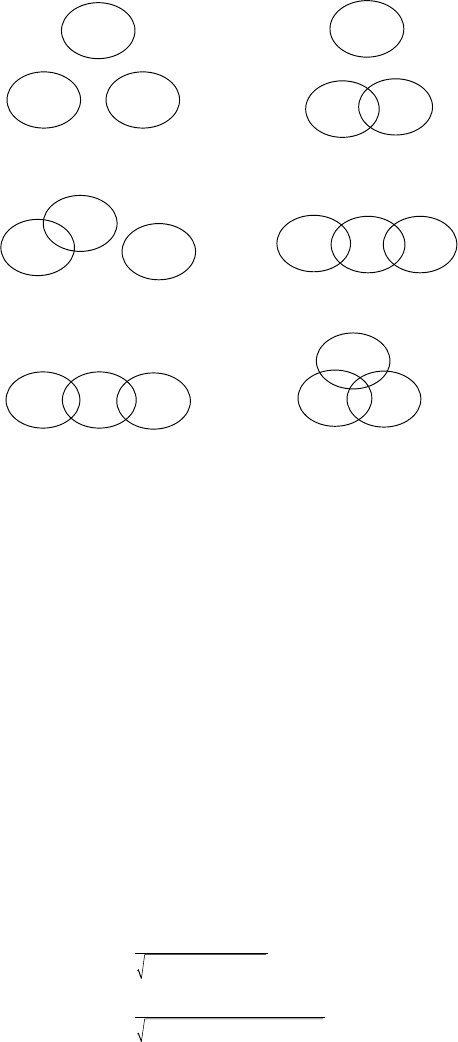

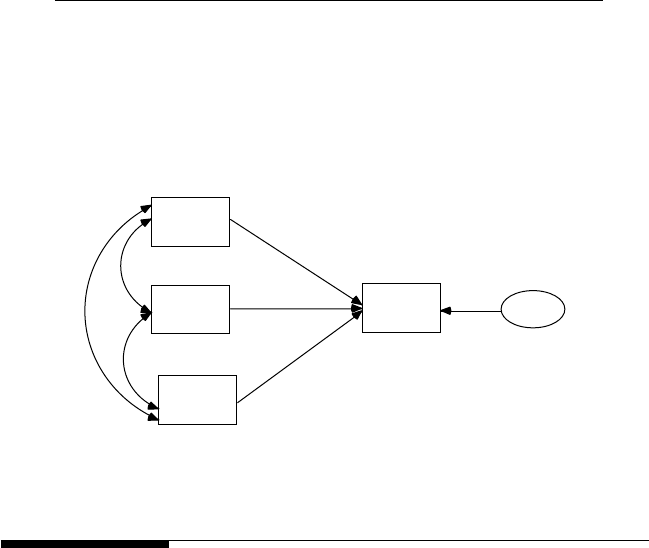

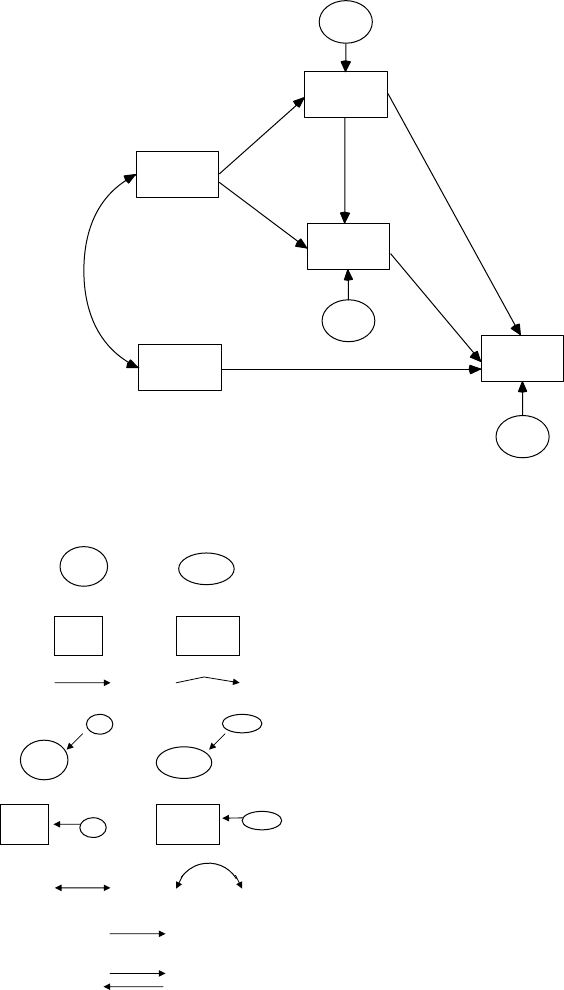

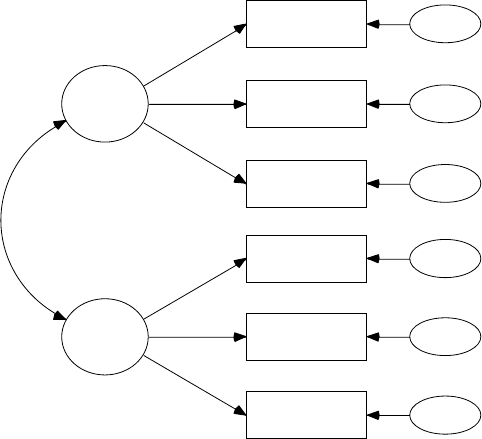

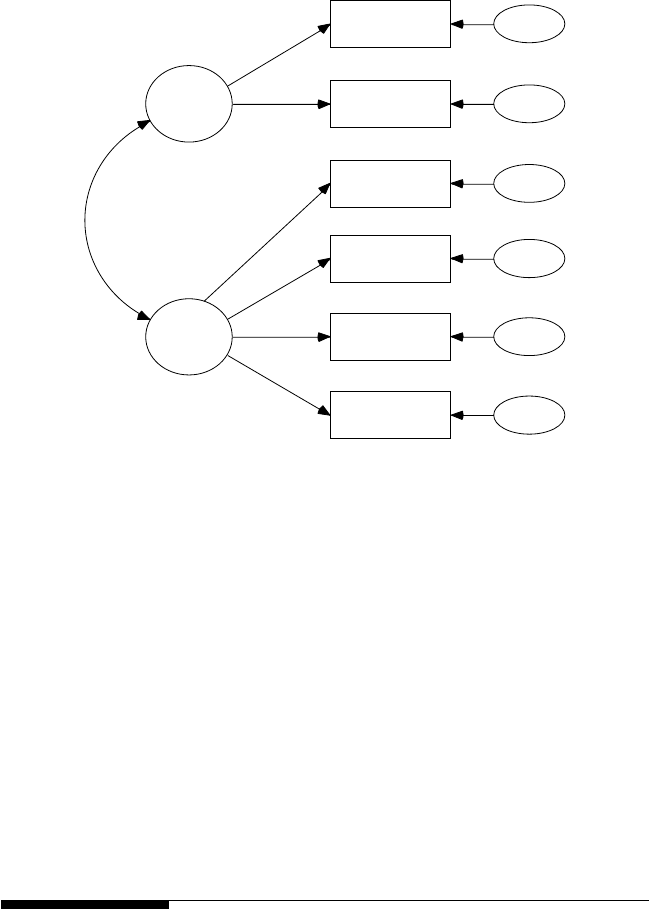

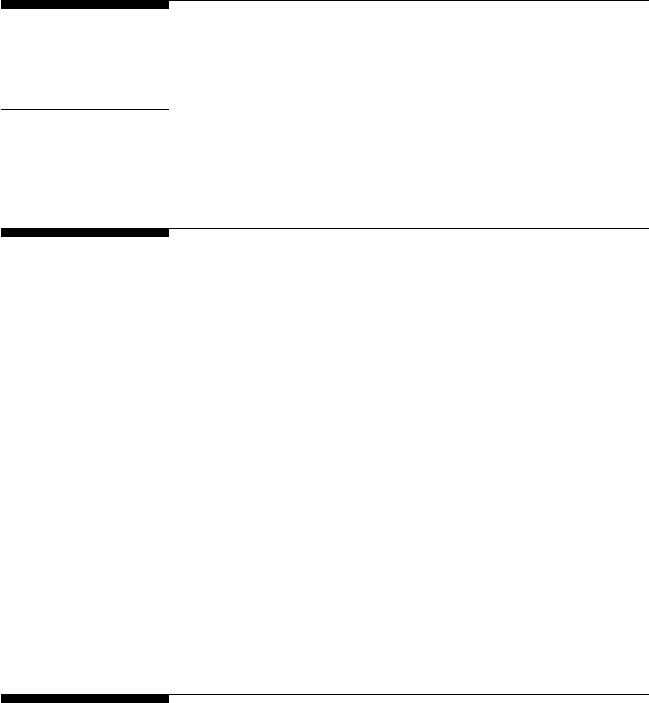

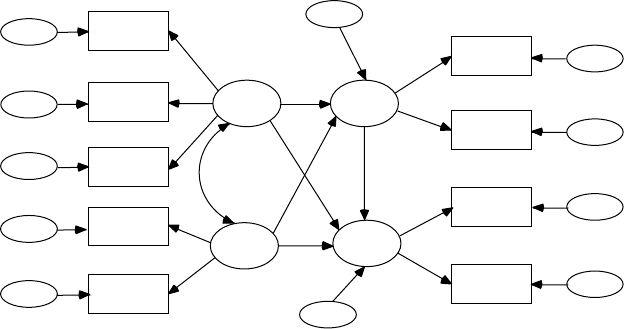

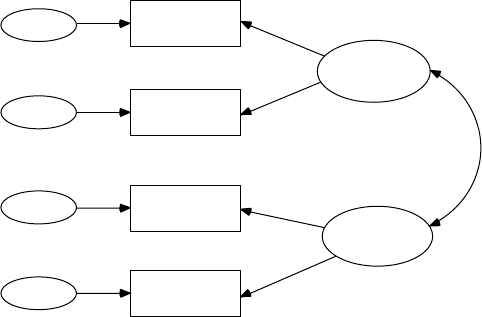

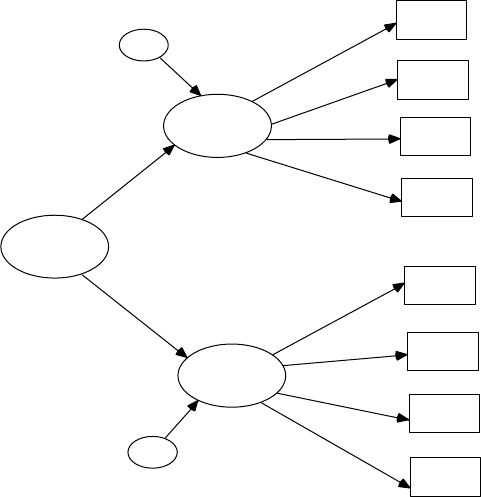

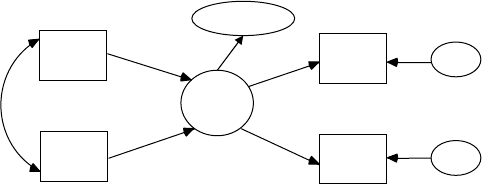

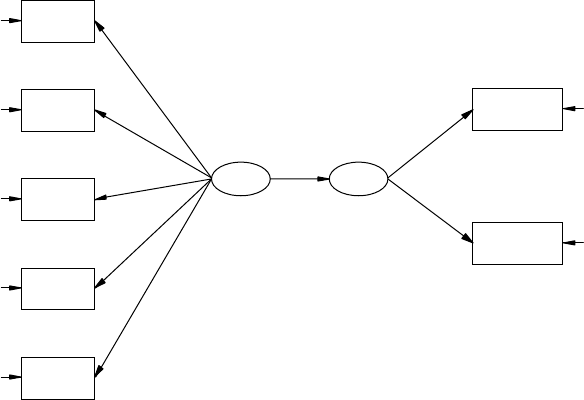

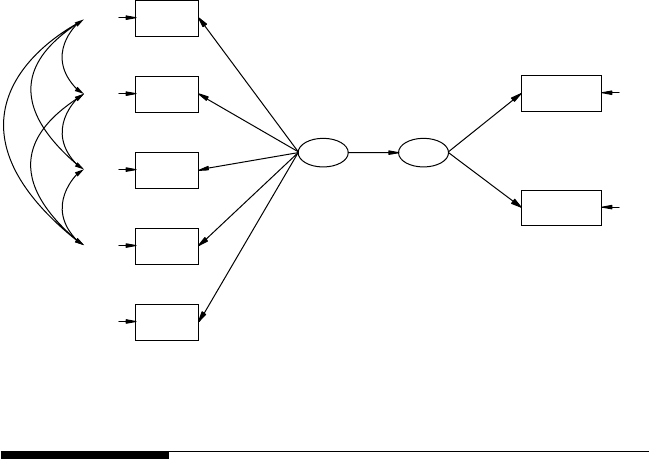

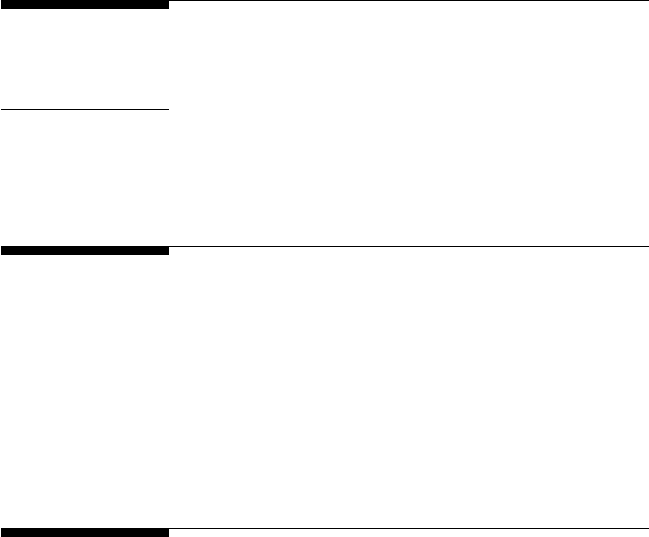

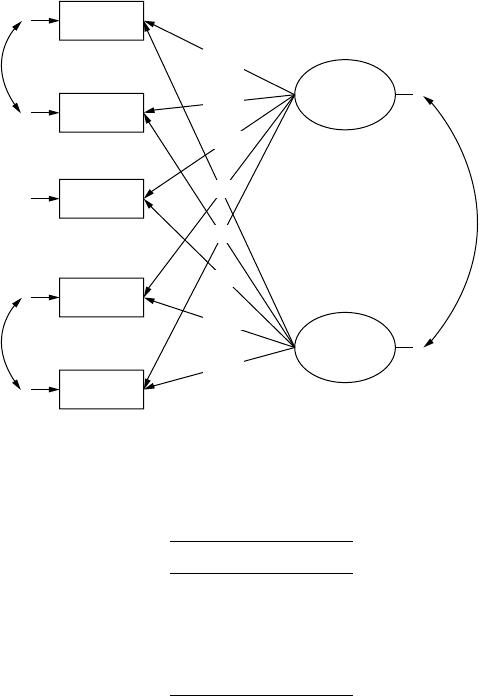

9.1 Observed Variables and Latent Variables ................................. 180

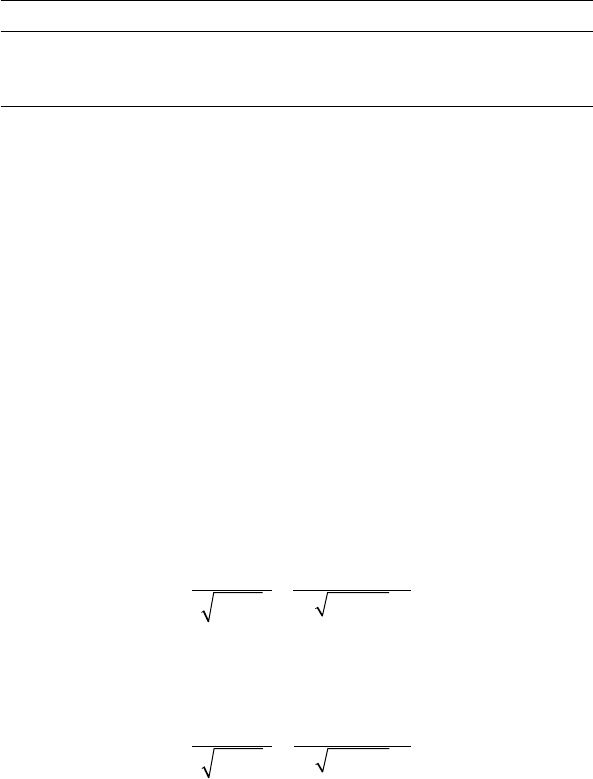

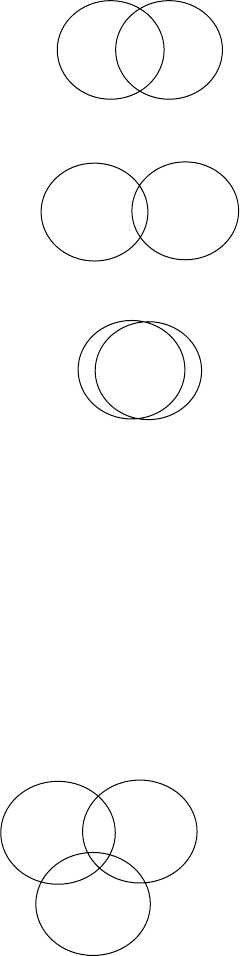

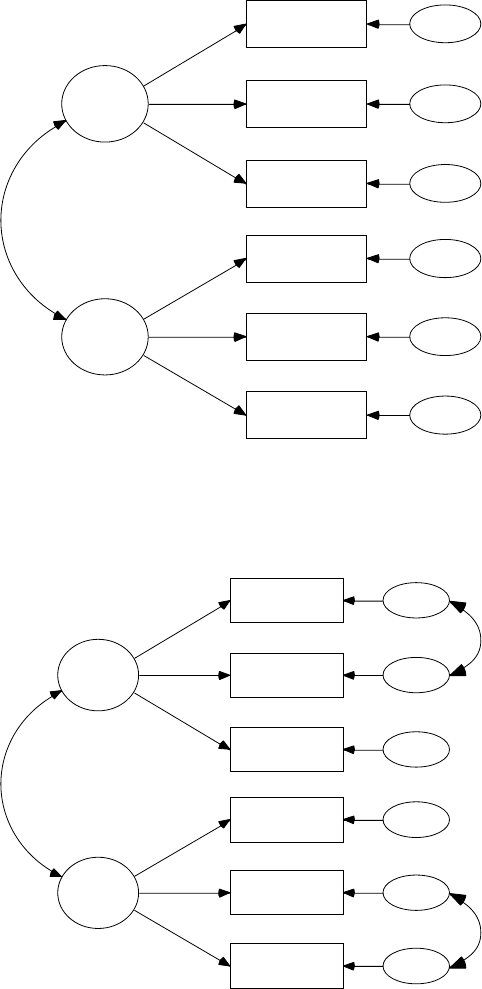

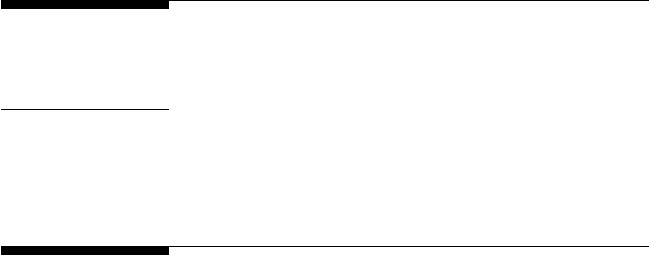

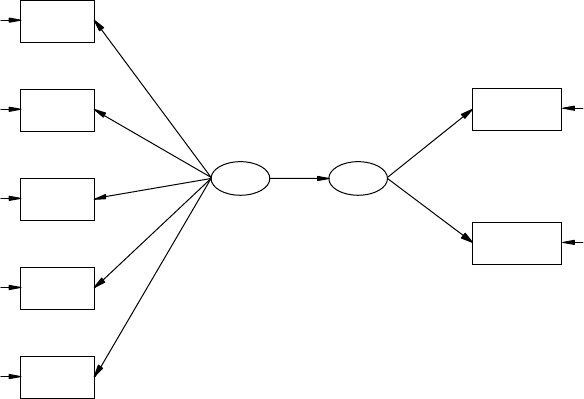

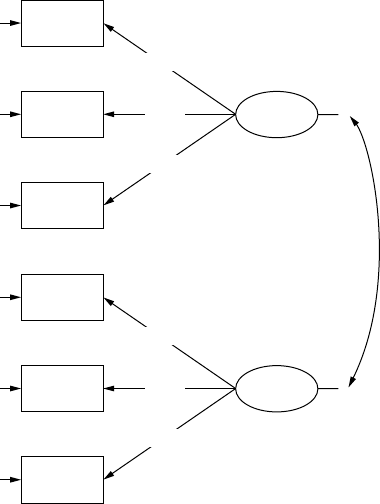

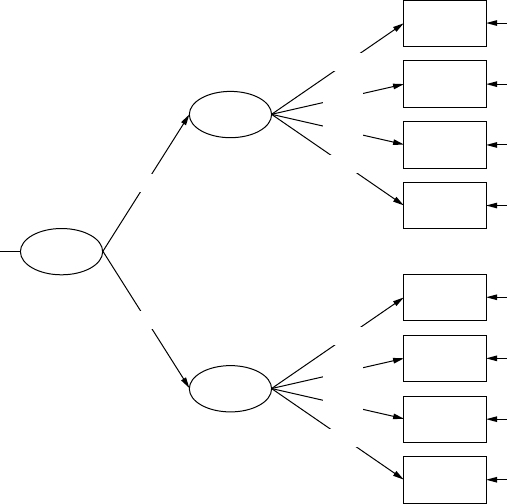

9.2 Measurement Model .................................................................... 184

Y102005.indb 9 4/3/10 4:25:17 PM

x Contents

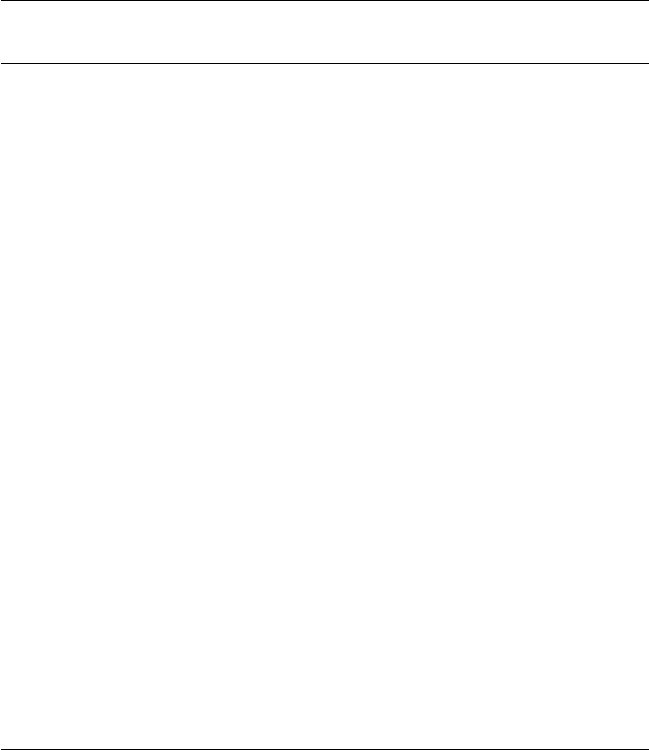

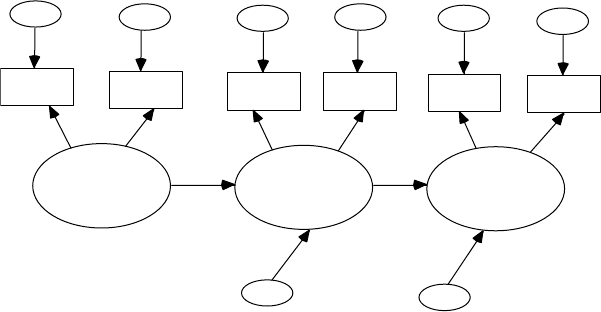

9.3 Structural Model .......................................................................... 186

9.4 Variances and Covariance Terms .............................................. 189

9.5 Two-Step/Four-Step Approach .................................................. 191

9.6 Summary ....................................................................................... 192

References ................................................................................................ 193

10 Developing Structural Equation Models: Part II ............................ 195

10.1 An Example ................................................................................... 195

10.2 Model Specification ...................................................................... 197

10.3 Model Identification ..................................................................... 200

10.4 Model Estimation ......................................................................... 202

10.5 Model Testing ............................................................................... 203

10.6 Model Modification ..................................................................... 205

10.7 Summary ....................................................................................... 207

Appendix: LISREL–SIMPLIS Structural Equation Model Program .....207

References ................................................................................................ 208

11 Reporting SEM Research: Guidelines and Recommendations ... 209

11.1 Data Preparation .......................................................................... 212

11.2 Model Specification ...................................................................... 213

11.3 Model Identification ..................................................................... 215

11.4 Model Estimation ..........................................................................216

11.5 Model Testing ............................................................................... 217

11.6 Model Modification ..................................................................... 218

11.7 Summary ....................................................................................... 219

References ................................................................................................ 220

12 Model Validation ................................................................................... 223

Key Concepts ........................................................................................... 223

12.1 Multiple Samples .......................................................................... 223

12.1.1 Model A Computer Output ...........................................226

12.1.2 Model B Computer Output ............................................ 227

12.1.3 Model C Computer Output ........................................... 228

12.1.4 Model D Computer Output ...........................................229

12.1.5 Summary .......................................................................... 229

12.2 Cross Validation ........................................................................... 229

12.2.1 ECVI .................................................................................. 230

12.2.2 CVI .................................................................................... 231

12.3 Bootstrap .......................................................................................234

12.3.1 PRELIS Graphical User Interface .................................. 234

12.3.2 LISREL and PRELIS Program Syntax .......................... 237

12.4 Summary ....................................................................................... 241

References ................................................................................................ 243


13 Multiple Sample, Multiple Group, and Structured Means Models ........................................................................................ 245

13.1 Multiple Sample Models ............................................................. 245

Sample 1 ........................................................................................ 247

Sample 2 ........................................................................................ 247

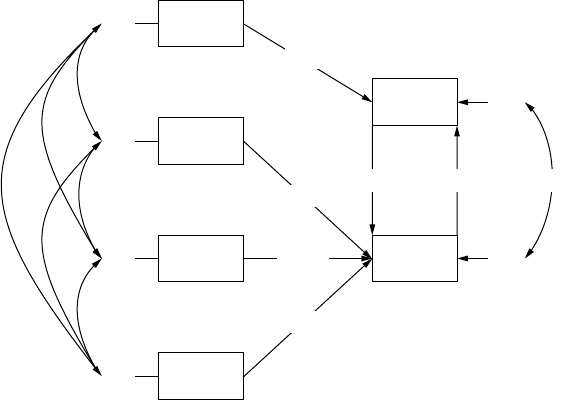

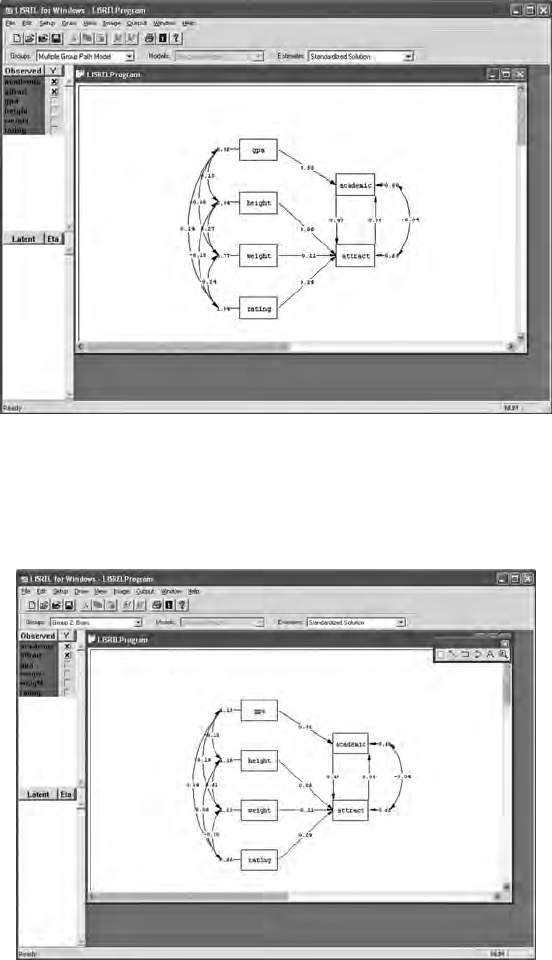

13.2 Multiple Group Models ...............................................................250

13.2.1 Separate Group Models .................................................. 251

13.2.2 Similar Group Model .....................................................255

13.2.3 Chi-Square Difference Test ............................................ 258

13.3 Structured Means Models .......................................................... 259

13.3.1 Model Specification and Identification ........................ 259

13.3.2 Model Fit .......................................................................... 261

13.3.3 Model Estimation and Testing ...................................... 261

13.4 Summary ....................................................................................... 263

Suggested Readings ................................................................................ 267

Multiple Samples ......................................................................... 267

Multiple Group Models .............................................................. 267

Structured Means Models ........................................................... 267

Chapter Footnote .................................................................................... 268

SPSS ................................................................................................ 268

References ................................................................................................ 269

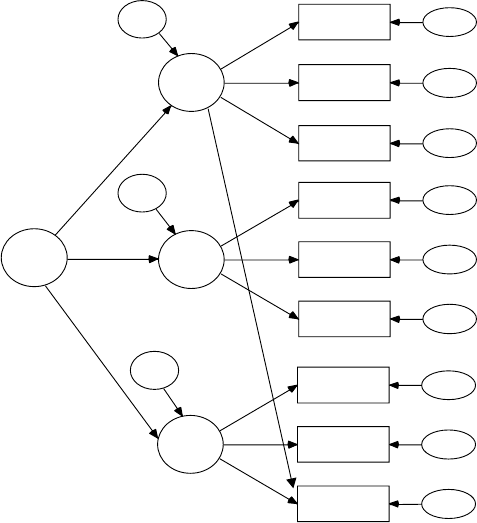

14 Second-Order, Dynamic, and Multitrait Multimethod Models .....271

14.1 Second-Order Factor Model ....................................................... 271

14.1.1 Model Specification and Identification ........................ 271

14.1.2 Model Estimation and Testing ...................................... 272

14.2 Dynamic Factor Model .................................................................274

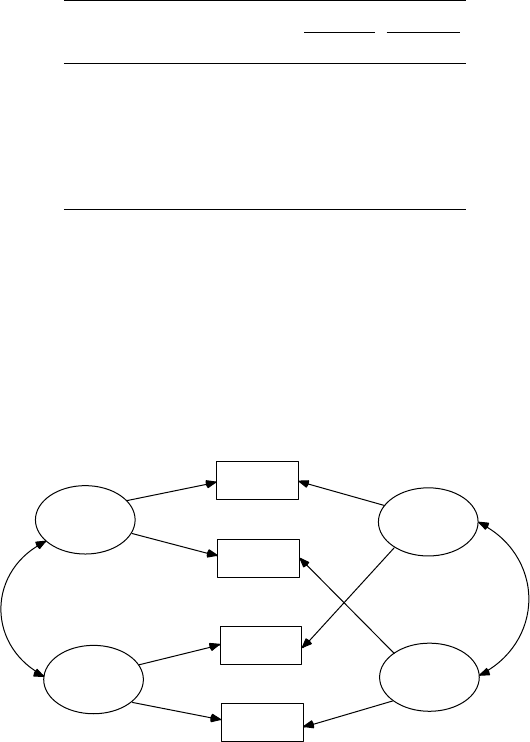

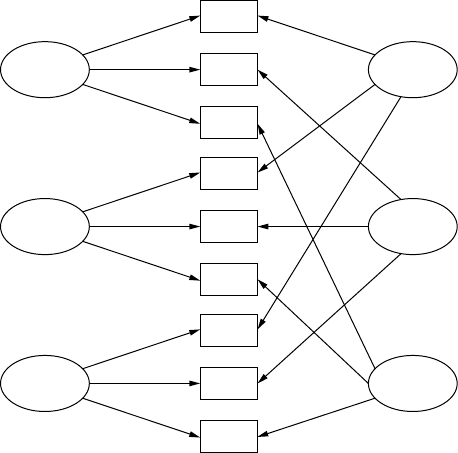

14.3 Multitrait Multimethod Model (MTMM) ................................. 277

14.3.1 Model Specification and Identification ........................ 279

14.3.2 Model Estimation and Testing ...................................... 280

14.3.3 Correlated Uniqueness Model ...................................... 281

14.4 Summary ....................................................................................... 286

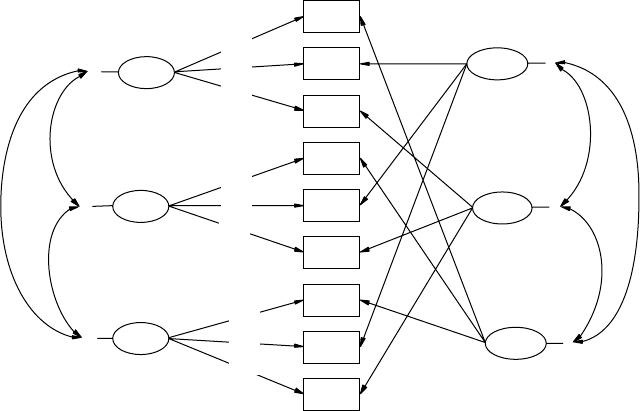

Suggested Readings ................................................................................ 290

Second-Order Factor Models ...................................................... 290

Dynamic Factor Models .............................................................. 290

Multitrait Multimethod Models ................................................. 290

Correlated Uniqueness Model ................................................... 291

References ................................................................................................ 291

15 Multiple Indicator–Multiple Cause, Mixture, and Multilevel Models ......................................................................... 293

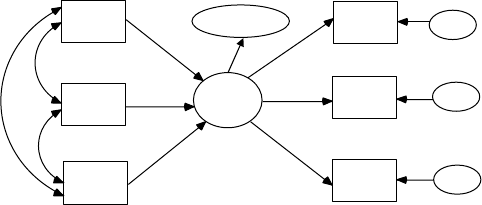

15.1 Multiple Indicator–Multiple Cause (MIMIC) Models ............. 293

15.1.1 Model Specification and Identification ........................ 294

15.1.2 Model Estimation and Model Testing .......................... 294


15.1.3 Model Modification ........................................................ 297

Goodness-of-Fit Statistics .............................................. 297

Measurement Equations ................................................ 297

Structural Equations ....................................................... 298

15.2 Mixture Models ............................................................................ 298

15.2.1 Model Specification and Identification ........................ 299

15.2.2 Model Estimation and Testing ...................................... 301

15.2.3 Model Modification ........................................................ 302

15.2.4 Robust Statistic ................................................................305

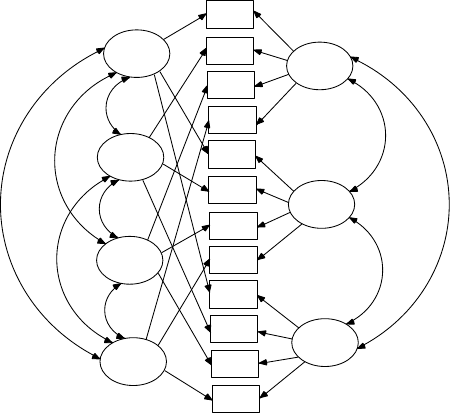

15.3 Multilevel Models ........................................................................ 307

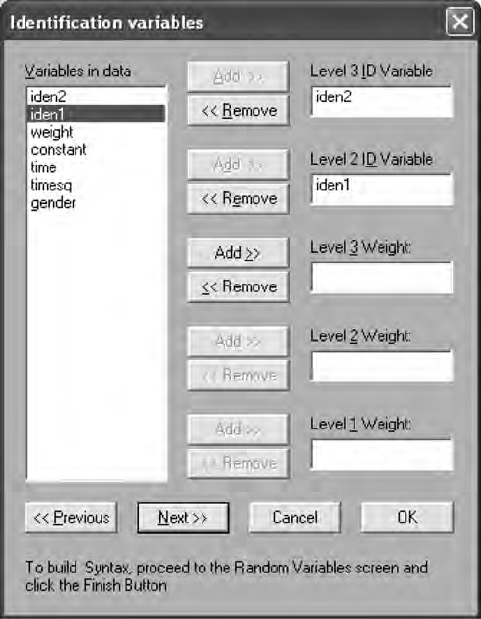

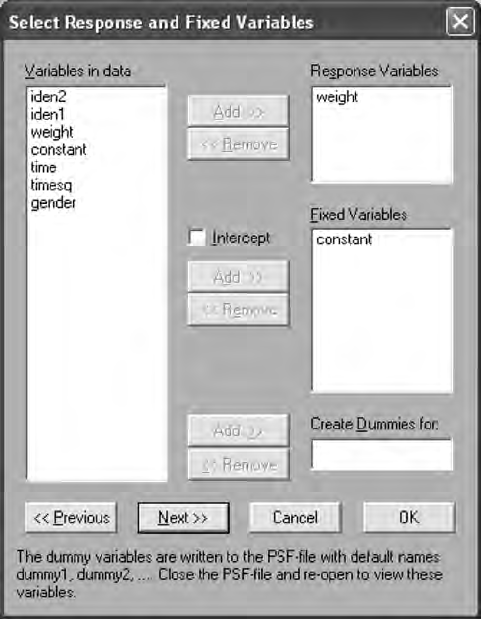

15.3.1 Constant Effects .............................................................. 313

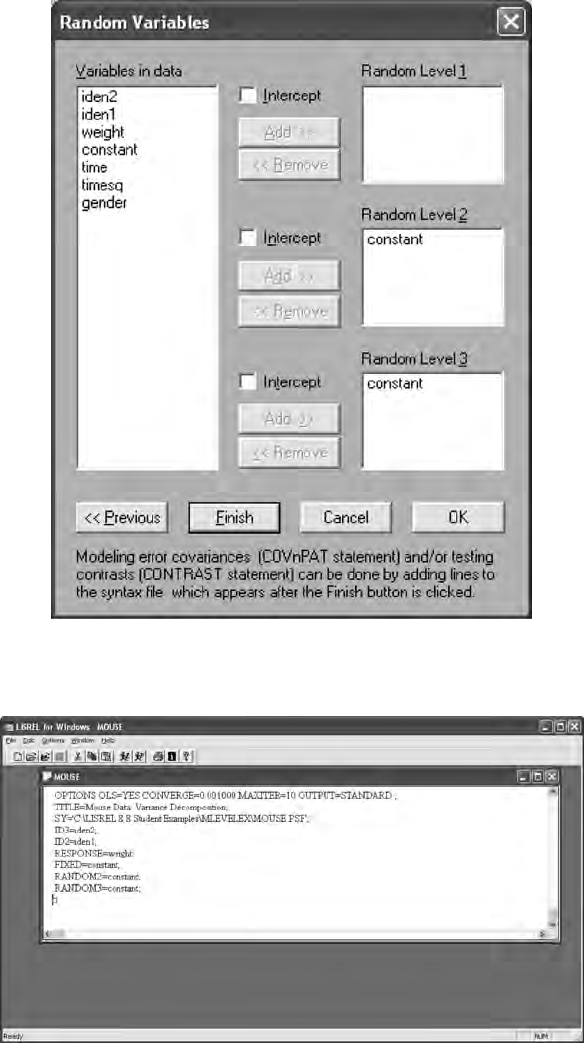

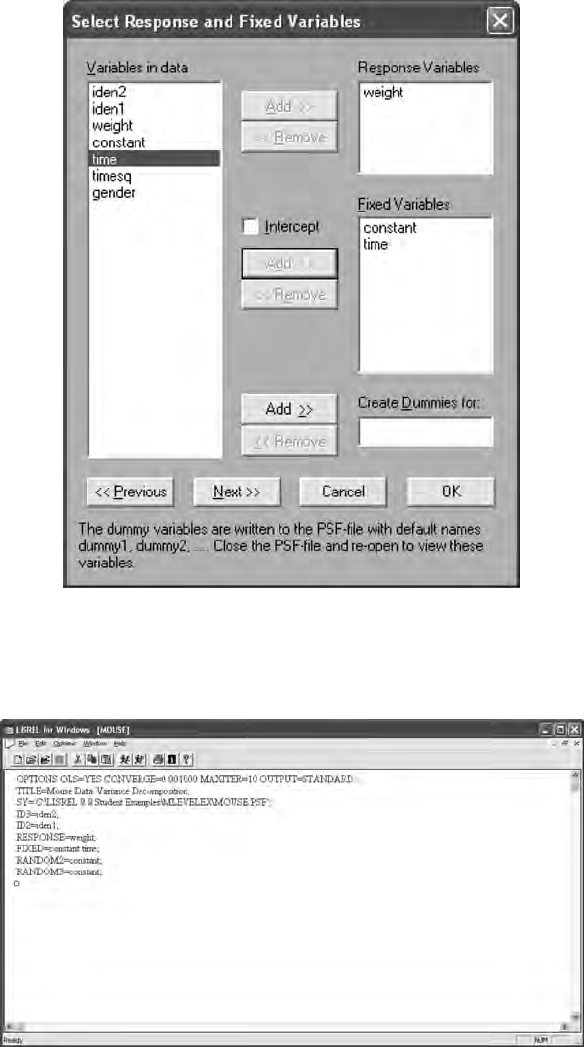

15.3.2 Time Effects ..................................................................... 313

15.3.3 Gender Effects ................................................................. 315

15.3.4 Multilevel Model Interpretation ....................................318

15.3.5 Intraclass Correlation ..................................................... 319

15.3.6 Deviance Statistic ............................................................ 320

15.4 Summary ....................................................................................... 320

Suggested Readings ................................................................................ 324

Multiple Indicator–Multiple Cause Models ............................. 324

Mixture Models ............................................................................ 325

Multilevel Models ........................................................................ 325

References ................................................................................................ 325

16 Interaction, Latent Growth, and Monte Carlo Methods ................ 327

16.1 Interaction Models ....................................................................... 327

16.1.1 Categorical Variable Approach ..................................... 328

16.1.2 Latent Variable Interaction Model ................................ 331

16.1.2.1 Computing Latent Variable Scores ............... 331

16.1.2.2 Computing Latent Interaction Variable ....... 333

16.1.2.3 Interaction Model Output ..............................335

16.1.2.4 Model Modification ......................................... 336

16.1.2.5 Structural Equations—No Latent

Interaction Variable ......................................... 336

16.1.3 Two-Stage Least Squares (TSLS) Approach ................ 337

16.2 Latent Growth Curve Models..................................................... 341

16.2.1 Latent Growth Curve Program ..................................... 343

16.2.2 Model Modification ........................................................344

16.3 Monte Carlo Methods ..................................................................345

16.3.1 PRELIS Simulation of Population Data........................ 346

16.3.2 Population Data from Specified Covariance Matrix .......................................................... 352

16.3.2.1 SPSS Approach ................................................ 352

16.3.2.2 SAS Approach ..................................................354

16.3.2.3 LISREL Approach ............................................ 355


16.3.3 Covariance Matrix from Specified Model ................... 359

16.4 Summary ....................................................................................... 365

Suggested Readings ................................................................................ 368

Interaction Models ....................................................................... 368

Latent Growth-Curve Models .................................................... 368

Monte Carlo Methods .................................................................. 368

References ................................................................................................ 369

17 Matrix Approach to Structural Equation Modeling ....................... 373

17.1 General Overview of Matrix Notation ...................................... 373

17.2 Free, Fixed, and Constrained Parameters ................................. 379

17.3 LISREL Model Example in Matrix Notation ............................ 382

LISREL8 Matrix Program Output (Edited and Condensed)..385

17.4 Other Models in Matrix Notation ..............................................400

17.4.1 Path Model .......................................................................400

17.4.2 Multiple-Sample Model ................................................. 404

17.4.3 Structured Means Model ............................................... 405

17.4.4 Interaction Models .......................................................... 410

PRELIS Computer Output .......................................................... 412

LISREL Interaction Computer Output .......................................416

17.5 Summary ....................................................................................... 421

References ................................................................................................ 423

Appendix A: Introduction to Matrix Operations ...................................425

Appendix B: Statistical Tables ...................................................................439

Answers to Selected Exercises ................................................................... 449

Author Index .................................................................................................. 489

Subject Index ................................................................................................. 495


About the Authors

RANDALL E. SCHUMACKER received his Ph.D. in educational psychology from Southern Illinois University. He is currently professor of educational research at the University of Alabama, where he teaches courses in structural equation modeling, multivariate statistics, multiple regression, and program evaluation. His research interests are varied, including modeling interaction in SEM, robust statistics (normal scores, centering, and variance inflation factor issues), and SEM specification search issues, as well as measurement model issues related to estimation, mixed-item formats, and reliability.

He has published in several journals, including Academic Medicine, Educational and Psychological Measurement, Journal of Applied Measurement, Journal of Educational and Behavioral Statistics, Journal of Research Methodology, Multiple Linear Regression Viewpoints, and Structural Equation Modeling. He has served on the editorial boards of numerous journals and is a member of the American Educational Research Association and the American Psychological Association (Division 5). He is also past president of the Southwest Educational Research Association and emeritus editor of the journal Structural Equation Modeling. He can be contacted at the University of Alabama College of Education.

RICHARD G. LOMAX received his Ph.D. in educational research methodology from the University of Pittsburgh. He is currently a professor in the School of Educational Policy and Leadership, Ohio State University, where he teaches courses in structural equation modeling, statistics, and quantitative research methodology.

His research primarily focuses on models of literacy acquisition, multivariate statistics, and assessment. He has published in such diverse journals as Parenting: Science and Practice; Understanding Statistics: Statistical Issues in Psychology, Education, and the Social Sciences; Violence Against Women; Journal of Early Adolescence; and Journal of Negro Education. He has served on the editorial boards of numerous journals and is a member of the American Educational Research Association, the American Statistical Association, and the National Reading Conference. He can be contacted at the Ohio State University College of Education and Human Ecology.


Preface

Approach

This book presents a basic introduction to structural equation modeling (SEM). Readers will find that we have kept to our tradition of keeping examples rudimentary and easy to follow. The reader is provided with a review of correlation and covariance, followed by multiple regression, path, and factor analyses, in order to better understand the building blocks of SEM. The book describes a basic structural equation model, followed by the presentation of several different types of structural equation models.

Our approach in the text is both conceptual and application oriented. Each chapter covers basic concepts, principles, and practice and then utilizes SEM software to provide meaningful examples. Each chapter also features an outline, key concepts, a summary, numerous examples from a variety of disciplines, tables, and figures, including path diagrams, to assist with conceptual understanding. Chapters with examples follow the conceptual sequence of SEM steps known as model specification, identification, estimation, testing, and modification.

The book now uses the LISREL 8.8 student version to make the software and examples readily available to readers. Please be aware that the student version, although free, does not contain all of the functional features of a fully licensed version. Given the advances in SEM software over the past decade, you should expect updates and patches to this software package. You should therefore become familiar with any new features and also explore the excellent library of examples and help materials. The LISREL 8.8 student version is an easy-to-use, Windows PC-based program with pull-down menus, dialog boxes, and drawing tools. Please check with Scientific Software (http://www.ssicentral.com) to access the program. Mac users who want to learn about Mac availability should also check there. There is also a hotlink to the Scientific Software site from the book page for A Beginner’s Guide to Structural Equation Modeling, 3rd edition, on the Textbook Resources tab at www.psypress.com.

The SEM model examples in the book do not require complicated programming skills, nor does the reader need an advanced understanding of statistics and matrix algebra to understand the model applications. We have provided a chapter on the matrix approach to SEM as well as an appendix on matrix operations for the interested reader. We encourage you to understand the matrices used in SEM models, especially for some of the more advanced SEM models you will encounter in the research literature.


Goals and Content Coverage

Our main goal in this third edition is for students and researchers to be able to conduct their own SEM model analyses, as well as to understand and critique published SEM research. These goals are supported by the conceptual and applied examples contained in the book and by several journal article references for each advanced SEM model type. We have also included an SEM checklist to guide your model analysis according to the basic steps a researcher takes.

As for content coverage, the book begins with an introduction to SEM (what it is, some history, why conduct it, and what software is available), followed by chapters on data entry and editing issues and on correlation. These early chapters are critical to understanding how missing data, nonnormality, scale of measurement, nonlinearity, outliers, and restriction of range in scores affect SEM analysis. Chapter 4 lays out the basic steps of model specification, identification, estimation, testing, and modification, followed by Chapter 5, which covers issues related to model fit indices, power, and sample size. Chapters 6 through 10 follow the basic SEM steps of modeling, with actual examples from different disciplines, using regression, path, confirmatory factor, and structural equation models. Logically, the next chapter (Chapter 11) presents information about reporting SEM research and includes an SEM checklist to guide decision-making. Chapter 12 presents different approaches to model validation, an important final step after obtaining an acceptable theoretical model. Chapters 13 through 16 provide SEM examples that introduce many of the different types of SEM model applications. The final chapter describes the matrix approach to structural equation modeling by using examples from the previous chapters.

Theoretical models are present in every discipline and therefore can be formulated and tested. This third edition expands SEM models and applications to provide students and researchers in medicine, political science, sociology, education, psychology, business, and the biological sciences with the basic concepts, principles, and practice necessary to test their theoretical models. We hope you become more familiar with structural equation modeling after reading the book and use SEM in your own research.

New to the Third Edition

The first edition of this book was one of the first books published on SEM, while the second edition greatly expanded knowledge of advanced SEM models. Since that time, we have had considerable experience utilizing the book in class with our students. As a result of those experiences, the third edition represents a more usable book for teaching SEM. As such, it is an ideal text for introductory graduate-level courses in structural equation modeling or factor analysis taught in departments of psychology, education, business, and other social and healthcare sciences. An understanding of correlation is assumed.

The third edition offers several new surprises, namely:

1. Our instruction and examples are now based on freely available software: the LISREL 8.8 student version.
2. More examples are presented from more disciplines, including input, output, and screenshots.
3. Every chapter has been updated and enhanced with additional material.
4. A website with raw data sets for the book’s examples and exercises, so they can be used with any SEM program; all of the book’s exercises; hotlinks to related websites; and answers to all of the exercises (for instructors only). To access the website, visit the book page or the Textbook Resource page at www.psypress.com.
5. Expanded coverage of advanced models, with more on multiple-group, multilevel, and mixture modeling (Chs. 13 and 15), second-order and dynamic factor models (Ch. 14), and Monte Carlo methods (Ch. 16).
6. Increased coverage of sample size and power (Ch. 5), including software programs, and of reporting research (Ch. 11).
7. New journal article references help readers better understand published research (Chs. 13–17).
8. Troubleshooting tips on how to address the most frequently encountered problems are found in Chapters 3 and 11.
9. Chapters 13 to 16 now include additional SEM model examples.
10. 25% new exercises, with answers to half of them in the back of the book for student review (and answers to all of them for instructors only on the book and/or Textbook Resource page at www.psypress.com).
11. Added matrix examples for several models in Chapter 17.
12. Updated references in all chapters on all key topics.

Overall, we believe this third edition is a more complete book that can be used to teach a full course in SEM. The past several years have seen an explosion in SEM coursework, books, websites, and training courses. We are proud to have been considered a starting point for many beginners to SEM. We hope you find that this third edition expands on many of the programming tools, trends, and topics in SEM today.


Acknowledgments

The third edition of this book represents more than thirty years of interacting with our colleagues and students who use structural equation modeling. As before, we are most grateful to the pioneers in the field of structural equation modeling, particularly to Karl Jöreskog, Dag Sörbom, Peter Bentler, James Arbuckle, and Linda and Bengt Muthén. These individuals have developed and shaped the new advances in the SEM field as well as the content of this book. They have also provided SEM researchers with software programs. We are also grateful to Gerhard Mels, who answered our questions and inquiries about SEM programming problems in the chapters. We also wish to thank the reviewers: James Leeper, The University of Alabama; Philip Smith, Augusta State University; Phil Wood, the University of Missouri–Columbia; and Ke-Hai Yuan, the University of Notre Dame.

This book was made possible through the encouragement of Debra Riegert at Routledge/Taylor & Francis, who insisted it was time for a third edition. We wish to thank her and her editorial assistant, Erin M. Flaherty, for coordinating all of the activity required to get a book into print. We also want to thank Suzanne Lassandro at Taylor & Francis Group, LLC, for helping us through the difficult process of revisions, galleys, and final book copy.

Randall E. Schumacker

The University of Alabama

Richard G. Lomax

The Ohio State University

1 Introduction

Key Concepts

Latent and observed variables

Independent and dependent variables

Types of models

Regression

Path

Confirmatory factor

Structural equation

History of structural equation modeling

Structural equation modeling software programs

Structural equation modeling can be easily understood if the researcher has a grounding in basic statistics, correlation, and regression analysis. The first three chapters provide a brief introduction to structural equation modeling (SEM), basic data entry and editing issues in statistics, and concepts related to the use of correlation coefficients in structural equation modeling. Chapter 4 covers the essential concepts of SEM: model specification, identification, estimation, testing, and modification. This basic understanding provides the framework for the material presented in chapters 5 through 8 on model-fit indices, regression analysis, path analysis, and confirmatory factor analysis models (measurement models). These form the basis for understanding the structural equation models (latent variable models) presented in chapters 9 and 10. Chapter 11 provides guidance on reporting structural equation modeling research. Chapter 12 addresses techniques used to establish model validity and generalization of findings. Chapters 13 to 16 present many of the advanced SEM models currently appearing in journal articles: multiple group, multiple indicators–multiple causes, mixture, multilevel, structured means, multitrait–multimethod, second-order factor, dynamic factor, interaction models, latent growth curve models, and Monte Carlo studies. Chapter 17 presents matrix notation for one of our SEM applications, covers the different matrices used in structural equation modeling, and presents multiple regression and path analysis solutions using matrix algebra. We include an introduction to matrix operations in Appendix A for readers who want a more mathematical understanding of matrix operations. To start our journey of understanding, we first ask, What is structural equation modeling? Then, we give a brief history of SEM, discuss the importance of SEM, and note the availability of SEM software programs.

1.1 What Is Structural Equation Modeling?

Structural equation modeling (SEM) uses various types of models to depict relationships among observed variables, with the same basic goal of providing a quantitative test of a theoretical model hypothesized by the researcher. More specifically, various theoretical models can be tested in SEM that hypothesize how sets of variables define constructs and how these constructs are related to each other. For example, an educational researcher might hypothesize that a student’s home environment influences her later achievement in school. A marketing researcher may hypothesize that consumer trust in a corporation leads to increased product sales for that corporation. A health care professional might believe that a good diet and regular exercise reduce the risk of a heart attack.

In each example, the researcher believes, based on theory and empirical research, that sets of variables define the constructs that are hypothesized to be related in a certain way. The goal of SEM analysis is to determine the extent to which the theoretical model is supported by sample data. If the sample data support the theoretical model, then more complex theoretical models can be hypothesized. If the sample data do not support the theoretical model, then either the original model can be modified and tested, or other theoretical models need to be developed and tested. Consequently, SEM tests theoretical models using the scientific method of hypothesis testing to advance our understanding of the complex relationships among constructs.

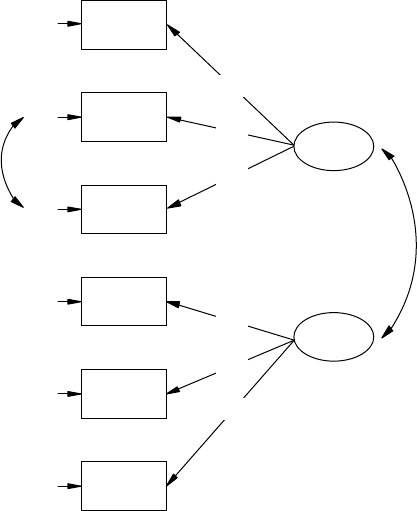

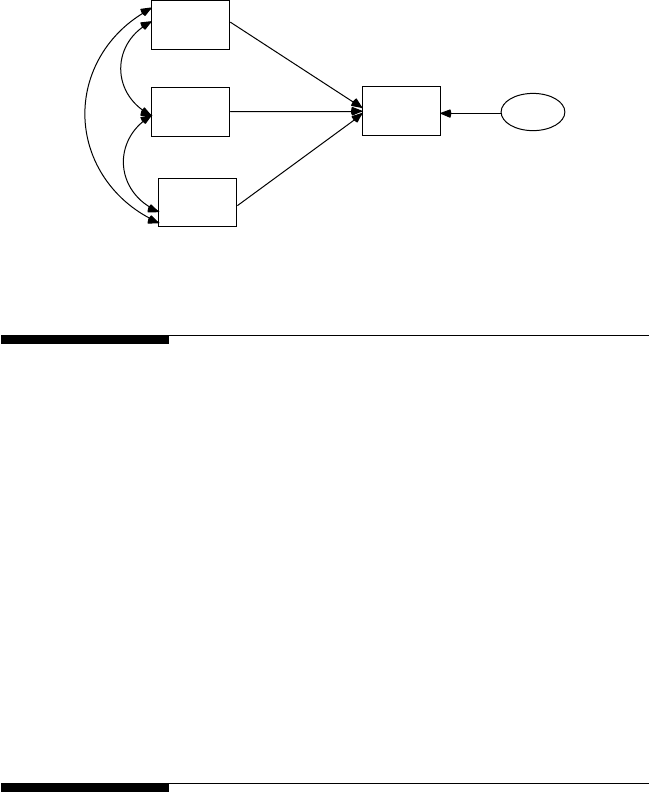

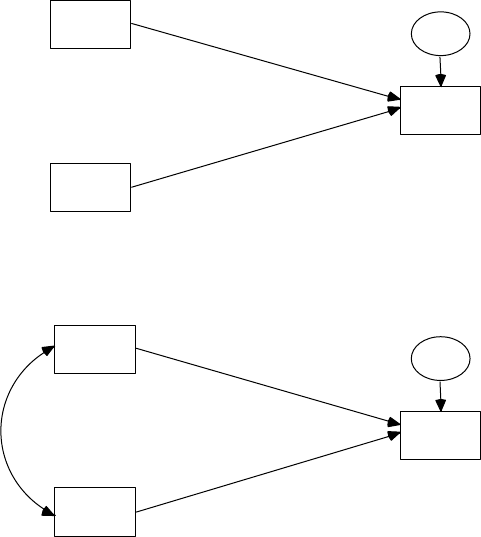

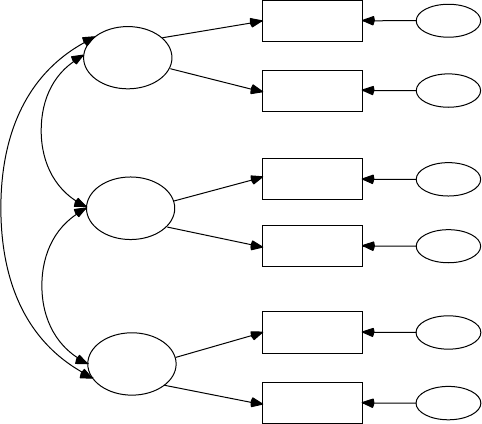

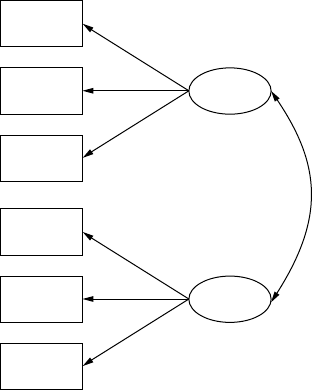

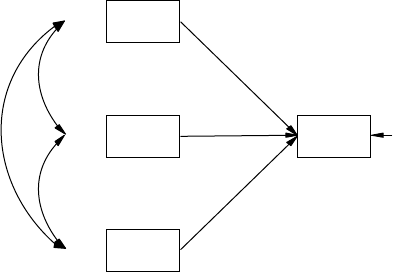

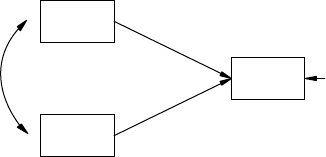

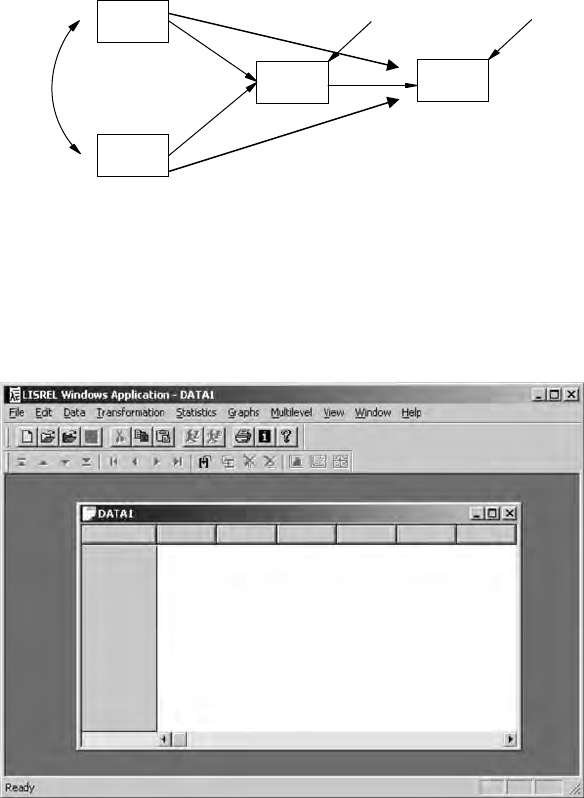

SEM can test various types of theoretical models. Basic models include regression (chapter 6), path (chapter 7), and confirmatory factor (chapter 8) models. Our reason for covering these basic models is that they provide a basis for understanding structural equation models (chapters 9 and 10). To better understand these basic models, we need to define a few terms. First, there are two major types of variables: latent variables and observed variables. Latent variables (constructs or factors) are variables that are not directly observable or measured. Latent variables are indirectly observed or measured, and hence are inferred from a set of observed variables that we actually measure using tests, surveys, and so on. For example, intelligence is a latent variable that represents a psychological construct. The confidence of consumers in American business is another latent variable, one representing an economic construct. The physical condition of adults is a third latent variable, one representing a health-related construct.

The observed, measured, or indicator variables are a set of variables that we use to define or infer the latent variable or construct. For example, the Wechsler Intelligence Scale for Children—Revised (WISC-R) is an instrument that produces a measured variable (scores), which one uses to infer the construct of a child’s intelligence. Additional indicator variables, that is, intelligence tests, could be used to indicate or define the construct of intelligence (latent variable). The Dow-Jones index is a standard measure of the American corporate economy construct. Other measured variables might include gross national product, retail sales, or export sales. Blood pressure is one of many health-related variables that could indicate a latent variable defined as “fitness.” Each of these observed or indicator variables represents one definition of the latent variable. Researchers use sets of indicator variables to define a latent variable. Other measurement instruments are therefore used to obtain additional indicator variables for these three constructs: the Stanford–Binet Intelligence Scale, the NASDAQ index, and an individual’s cholesterol level, respectively.
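To make this idea concrete, here is a minimal Python simulation. It is a sketch under stated assumptions, not a method from the book. We invent a latent score for each person, something that could never be observed in practice. We then generate four hypothetical indicators as that score plus independent measurement error. Finally, we compare how well one indicator and the sum of all four track the latent variable. Under these assumptions, the theoretical correlations are about .71 for a single indicator and about .89 for the four-indicator sum.

```python
import random
import statistics


def pearson_r(x, y):
    """Pearson correlation between two equal-length lists of scores."""
    mx, my = statistics.fmean(x), statistics.fmean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5


random.seed(1)
n = 2000

# Hypothetical latent scores (e.g., "fitness"); in real data these are never observed.
latent = [random.gauss(0, 1) for _ in range(n)]

# Four hypothetical indicators: latent score plus independent measurement error (SD = 1).
indicators = [[t + random.gauss(0, 1) for t in latent] for _ in range(4)]

# Composite: each person's four indicator scores summed into one score.
composite = [sum(person) for person in zip(*indicators)]

single = pearson_r(indicators[0], latent)
combined = pearson_r(composite, latent)
print(f"one indicator vs. latent: r = {single:.2f}")
print(f"four-indicator sum vs. latent: r = {combined:.2f}")
```

Adding indicators raises the correlation because the independent errors partly cancel when summed. SEM goes a step further: it models the latent variable and the measurement error terms explicitly instead of simply summing scores.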

Variables, whether they are observed or latent, can also be defined as either independent variables or dependent variables. An independent variable is a variable that is not influenced by any other variable in the model. A dependent variable is a variable that is influenced by another variable in the model. Let us return to the previous examples and specify the independent and dependent variables. The educational researcher hypothesizes that a student’s home environment (independent latent variable) influences school achievement (dependent latent variable). The marketing researcher believes that consumer trust in a corporation (independent latent variable) leads to increased product sales (dependent latent variable). The health care professional wants to determine whether a good diet and regular exercise (two independent latent variables) influence the frequency of heart attacks (dependent latent variable).
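The definition above depends only on the structure of the hypothesized model. A variable is dependent if at least one arrow in the model points to it, and independent otherwise. The small Python sketch below applies that rule mechanically. It is our own illustration, and it encodes the three example models as hypothetical lists of (cause, effect) pairs.

```python
# Hypothetical encoding of the three example models as directed edges:
# each (cause, effect) pair means "cause influences effect".
models = {
    "education": [("home environment", "school achievement")],
    "marketing": [("consumer trust", "product sales")],
    "health": [("diet", "heart attacks"), ("exercise", "heart attacks")],
}


def classify_variables(edges):
    """Split the variables of one model into independent and dependent lists.

    A variable is dependent if at least one arrow points to it;
    otherwise it is independent (not influenced by any other variable).
    """
    variables = {v for edge in edges for v in edge}
    dependent = {effect for _, effect in edges}
    independent = variables - dependent
    return sorted(independent), sorted(dependent)


for name, edges in models.items():
    independent, dependent = classify_variables(edges)
    print(f"{name}: independent={independent}, dependent={dependent}")
```

Under this definition, some variables both receive and send an arrow, such as b in a chain a → b → c. Such a variable counts as dependent because another variable in the model influences it.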

The basic SEM models in chapters 6 through 8 illustrate the use of

observed variables and latent variables when dened as independent

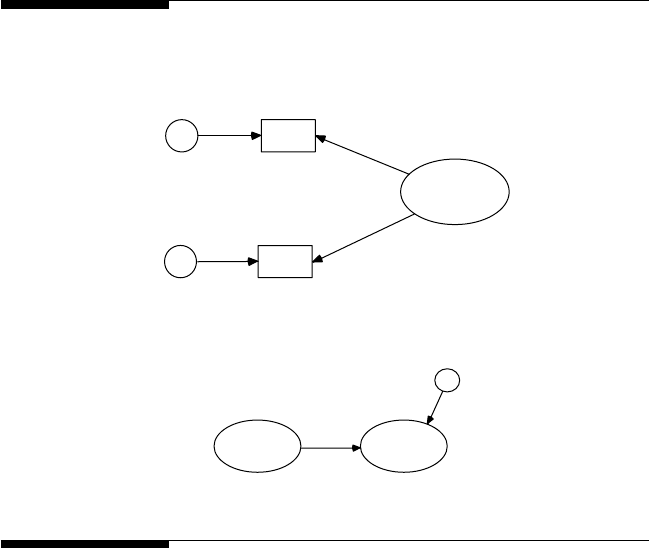

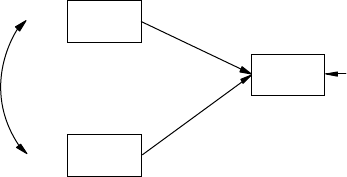

or dependent. A regression model consists solely of observed variables

where a single dependent observed variable is predicted or explained by

one or more independent observed variables; for example, a parent’s edu-

cation level (independent observed variable) is used to predict his or her

child’s achievement score (dependent observed variable). A path model is

Y102005.indb 3 3/22/10 3:24:44 PM

4 A Beginner’s Guide to Structural Equation Modeling

also specied entirely with observed variables, but the exibility allows

for multiple independent observed variables and multiple dependent

observed variables—for example, export sales, gross national product,

and NASDAQ index inuence consumer trust and consumer spending

(dependent observed variables). Path models, therefore, test more com-

plex models than regression models. Conrmatory factor models con-

sist of observed variables that are hypothesized to measure one or more

latent variables (independent or dependent); for example, diet, exercise,

and physiology are observed measures of the independent latent variable

“tness.” An understanding of these basic models will help in under-

standing structural equation modeling, which combines path and factor

analytic models. Structural equation models consist of observed variables

and latent variables, whether independent or dependent; for example, an

independent latent variable (home environment) inuences a dependent

latent variable (achievement), where both types of latent variables are

measured, dened, or inferred by multiple observed or measured indica-

tor variables.
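Some readers may be curious about how “combining path and factor models” is written formally. The standard LISREL formulation uses the Greek and matrix notation covered in chapter 17. It has one structural equation among the latent variables and two measurement equations that link the latent variables to their indicators. The block below is our own compact restatement of that standard form, not a reproduction of chapter 17.

```latex
\begin{aligned}
\boldsymbol{\eta} &= \mathbf{B}\,\boldsymbol{\eta} + \boldsymbol{\Gamma}\,\boldsymbol{\xi} + \boldsymbol{\zeta}
  && \text{structural model (latent variables)}\\
\mathbf{y} &= \boldsymbol{\Lambda}_y\,\boldsymbol{\eta} + \boldsymbol{\varepsilon}
  && \text{measurement model for dependent latent variables}\\
\mathbf{x} &= \boldsymbol{\Lambda}_x\,\boldsymbol{\xi} + \boldsymbol{\delta}
  && \text{measurement model for independent latent variables}
\end{aligned}
```

The symbols in these equations are as follows:

- ξ (xi) holds the independent latent variables and η (eta) the dependent ones.
- x and y are the observed indicators of ξ and η, respectively.
- δ, ε, and ζ are error terms.

In the home environment example, ξ would be home environment and η would be achievement. With no paths from one η to another (B = 0), the structural part reduces to η = γξ + ζ.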

1.2 History of Structural Equation Modeling

To discuss the history of structural equation modeling, we explain the following four types of related models and their chronological order of development: regression, path, confirmatory factor, and structural equation models.

The first model involves linear regression models that use a correlation coefficient and the least squares criterion to compute regression weights. Regression models were made possible because Karl Pearson created a formula for the correlation coefficient in 1896 that provides an index for the relationship between two variables (Pearson, 1938). The regression model permits the prediction of dependent observed variable scores (Y scores), given a linear weighting of a set of independent observed variable scores (X scores) that minimizes the sum of squared residual error values. The mathematical basis for the linear regression model is found in basic algebra. Regression analysis provides a test of a theoretical model that may be useful for prediction (e.g., admission to graduate school or budget projections). In an example study, regression analysis was used to predict student exam scores in statistics (dependent variable) from a series of collaborative learning group assignments (independent variables; Delucchi, 2006). The results provided some support for collaborative learning groups improving statistics exam performance, although not for all tasks.
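The least squares idea can be checked numerically. The Python sketch below uses invented data for the parent-education example (hypothetical values, not from any study). It computes the intercept and slope that minimize the sum of squared residuals. It then confirms that the slope equals Pearson’s r rescaled by the two standard deviations, b = r(s_Y/s_X).

```python
import statistics


def pearson_r(x, y):
    """Pearson correlation coefficient for two equal-length lists."""
    mx, my = statistics.fmean(x), statistics.fmean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5


def least_squares(x, y):
    """Intercept and slope minimizing the sum of squared residuals (one predictor)."""
    mx, my = statistics.fmean(x), statistics.fmean(y)
    slope = sum((a - mx) * (b - my) for a, b in zip(x, y)) / sum((a - mx) ** 2 for a in x)
    return my - slope * mx, slope


def sse(x, y, intercept, slope):
    """Sum of squared residual errors for a given regression line."""
    return sum((b - (intercept + slope * a)) ** 2 for a, b in zip(x, y))


# Hypothetical data: parent's years of education (X) and child's achievement score (Y).
x = [10, 12, 12, 14, 16, 16, 18, 20]
y = [48, 55, 51, 60, 62, 66, 70, 75]

a, b = least_squares(x, y)
r = pearson_r(x, y)
b_from_r = r * statistics.stdev(y) / statistics.stdev(x)

print(f"Y' = {a:.2f} + {b:.3f}X   (r = {r:.3f})")
print(f"slope from r: {b_from_r:.3f}")
print(f"SSE at the least squares line: {sse(x, y, a, b):.2f}")
print(f"SSE with slope nudged by +0.1: {sse(x, y, a, b + 0.1):.2f}")
```

The last two lines illustrate the least squares criterion: moving the line away from the fitted weights always increases the sum of squared residuals.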


Some years later, Charles Spearman (1904, 1927) used the correlation coefficient to determine which items correlated or went together to create the factor model. His basic idea was that if a set of items correlated or went together, individual responses to the set of items could be summed to yield a score that would measure, define, or infer a construct. Spearman was the first to use the term factor analysis in defining a two-factor construct for a theory of intelligence. D. N. Lawley and L. L. Thurstone in 1940 further developed applications of factor models and proposed instruments (sets of items) that yielded observed scores from which constructs could be inferred. Most of the aptitude, achievement, and diagnostic tests, surveys, and inventories in use today were created using factor analytic techniques. The term confirmatory factor analysis (CFA) is used today based in part on earlier work by Howe (1955), Anderson and Rubin (1956), and Lawley (1958). The CFA method was more fully developed by Karl Jöreskog in the 1960s to test whether a set of items defined a construct. Jöreskog completed his dissertation in 1963, published the first article on CFA in 1969, and subsequently helped develop the first CFA software program. Factor analysis has been used for over 100 years to create measurement instruments in many academic disciplines, while today CFA is used to test the existence of these theoretical constructs. In an example study, Goldberg (1990) used CFA to confirm the “Big Five” model of personality. The five-factor model of extraversion, agreeableness, conscientiousness, neuroticism, and intellect was confirmed through the use of multiple indicator variables for each of the five hypothesized factors.

Sewell Wright (1918, 1921, 1934), a biologist, developed the third type of model, a path model. Path models use correlation coefficients and regression analysis to model more complex relationships among observed variables. The first applications of path analysis dealt with models of animal behavior. Unfortunately, path analysis was largely overlooked until econometricians reconsidered it in the 1950s as a form of simultaneous equation modeling (e.g., H. Wold) and sociologists rediscovered it in the 1960s (e.g., O. D. Duncan and H. M. Blalock). In many respects, path analysis involves solving a set of simultaneous regression equations that theoretically establish the relationships among the observed variables in the path model. In an example path analysis study, Walberg’s theoretical model of educational productivity was tested for fifth- through eighth-grade students (Parkerson et al., 1984). The relations among the following variables were analyzed in a single model: home environment, peer group, media, ability, social environment, time on task, motivation, and instructional strategies. All of the hypothesized paths among those variables were shown to be statistically significant, providing support for the educational productivity model.
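The Python sketch below shows what “solving a set of simultaneous regression equations” can mean in practice. It simulates data for a small hypothetical recursive path model: X influences M, and both X and M influence Y. The variable roles and the coefficient values are invented for illustration. The sketch then recovers the path coefficients by fitting one least squares regression per dependent variable. This equation-by-equation approach is a simplification that is appropriate for recursive models with uncorrelated errors. SEM programs such as LISREL instead estimate all equations together.

```python
import random
import statistics

random.seed(42)
n = 5000

# Hypothetical "true" path coefficients used only to simulate the data.
A, B, C = 0.5, 0.4, 0.2  # X -> M, M -> Y, X -> Y (direct path)

x = [random.gauss(0, 1) for _ in range(n)]
m = [A * xi + random.gauss(0, 1) for xi in x]
y = [B * mi + C * xi + random.gauss(0, 1) for mi, xi in zip(m, x)]


def center(v):
    """Subtract the mean so regressions need no intercept term."""
    mu = statistics.fmean(v)
    return [vi - mu for vi in v]


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def ols_one(dep, x1):
    """Slope from regressing dep on one centered predictor."""
    return dot(x1, dep) / dot(x1, x1)


def ols_two(dep, x1, x2):
    """Slopes from regressing dep on two centered predictors (2x2 normal equations)."""
    s11, s22, s12 = dot(x1, x1), dot(x2, x2), dot(x1, x2)
    s1y, s2y = dot(x1, dep), dot(x2, dep)
    det = s11 * s22 - s12 ** 2
    return (s22 * s1y - s12 * s2y) / det, (s11 * s2y - s12 * s1y) / det


xc, mc, yc = center(x), center(m), center(y)
a_hat = ols_one(mc, xc)             # equation 1: M = a*X + error
b_hat, c_hat = ols_two(yc, mc, xc)  # equation 2: Y = b*M + c*X + error
print(f"X->M: {a_hat:.2f}  M->Y: {b_hat:.2f}  X->Y: {c_hat:.2f}")
```

The estimates are unstandardized path coefficients. With this sample size, they should land close to the simulated values 0.5, 0.4, and 0.2.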

The final model type is structural equation modeling (SEM). SEM models essentially combine path models and confirmatory factor models; that is, SEM models incorporate both latent and observed variables. The early development of SEM models was due to Karl Jöreskog (1969, 1973), Ward Keesling (1972), and David Wiley (1973). This approach was initially known as the JKW model. It became known as the linear structural relations model (LISREL) with the development of the first software program, LISREL, in 1973. Since then, many SEM articles have been published. For example, Shumow and Lomax (2002) tested a theoretical model of parental efficacy for adolescent students. For the overall sample, neighborhood quality predicted parental efficacy, which predicted parental involvement and monitoring, both of which predicted academic and social-emotional adjustment.

Jöreskog and van Thillo originally developed the LISREL software program at the Educational Testing Service (ETS) using a matrix command language (i.e., involving Greek and matrix notation), which is described in chapter 17. The first publicly available version, LISREL III, was released in 1976. Later, in 1993, LISREL 8 was released; it introduced the SIMPLIS (SIMPle LISrel) command language, in which equations are written using variable names. In 1999, the first interactive version of LISREL was released. LISREL 8 introduced the dialog box interface, which uses pull-down menus and point-and-click features to develop models. It also introduced the path diagram mode, a drawing program for developing models. Karl Jöreskog was recognized by Cudeck, DuToit, and Sörbom (2001), who edited a Festschrift in honor of his contributions to the field of structural equation modeling. Their volume contains chapters by scholars who address the many topics, concerns, and applications in the field of structural equation modeling today, including milestones in factor analysis; measurement models; robustness, reliability, and fit assessment; repeated measurement designs; ordinal data; and interaction models. We cover many of these topics in this book, although not in as great a depth. The field of structural equation modeling across all disciplines has expanded since 1994. Hershberger (2003) found that between 1994 and 2001 the number of journal articles concerned with SEM increased, the number of journals publishing SEM research increased, SEM became a popular choice among multivariate methods, and the journal Structural Equation Modeling became the primary source for technical developments in structural equation modeling.

1.3 Why Conduct Structural Equation Modeling?

Why is structural equation modeling popular? There are at least four major reasons for the popularity of SEM. First, researchers are becoming more aware of the need to use multiple observed variables to better understand their area of scientific inquiry. Basic statistical methods use only a limited number of variables and are not capable of dealing with the sophisticated theories being developed. The use of a small number of variables to understand complex phenomena is limiting. For instance, the use of simple bivariate correlations is not sufficient for examining a sophisticated theoretical model. In contrast, structural equation modeling permits complex phenomena to be statistically modeled and tested. SEM techniques are therefore becoming the preferred method for confirming (or disconfirming) theoretical models in a quantitative fashion.

A second reason involves the greater recognition given to the validity and reliability of observed scores from measurement instruments. Specifically, measurement error has become a major issue in many disciplines, but measurement error and statistical analysis of data have traditionally been treated separately. Structural equation modeling techniques explicitly take measurement error into account when statistically analyzing data. As noted in subsequent chapters, SEM analysis includes latent and observed variables as well as measurement error terms in certain SEM models.

A third reason pertains to how structural equation modeling has matured over the past 30 years, especially in its ability to analyze more advanced theoretical SEM models. For example, group differences in theoretical models can be assessed through multiple-group SEM models. In addition, analyzing educational data collected at more than one level—for example, school districts, schools, and teachers with student data—is now possible using multilevel SEM modeling. As a final example, interaction terms can now be included in an SEM model so that main effects and interaction effects can be tested. These advanced SEM models and techniques have provided many researchers with an increased capability to analyze sophisticated theoretical models of complex phenomena, thus requiring less reliance on basic statistical methods.

Finally, SEM software programs have become increasingly user-friendly. For example, until 1993 LISREL users had to input the program syntax for their models using Greek and matrix notation. At that time, many researchers sought help because of the complex programming requirements and the knowledge of SEM syntax that was required. Today, most SEM software programs are Windows-based and use pull-down menus or drawing programs to generate the program syntax internally. Therefore, SEM software programs are now easier to use and contain features similar to other Windows-based software packages. However, such ease of use necessitates statistical training in SEM modeling and software via courses, workshops, or textbooks to avoid mistakes and errors in analyzing sophisticated theoretical models.


1.4 Structural Equation Modeling Software Programs

Although the LISREL program was the first SEM software program, other software programs have been developed since the mid-1980s. Some of the other programs include AMOS, EQS, Mx, Mplus, Ramona, and Sepath, to name a few. These software programs are each unique in their own way, with some offering specialized features for conducting different SEM applications. Many of these SEM software programs offer the following capabilities:

- statistical analysis of raw data (e.g., means, correlations, missing data conventions)
- routines for handling missing data and detecting outliers
- generating the program’s syntax
- diagramming the model
- import and export of data and figures of a theoretical model

Also, many of the programs come with sets of data and program examples that are clearly explained in their user guides. Many of these software programs have been reviewed in the journal Structural Equation Modeling.

The pricing information for SEM software varies depending on individual, group, or site license arrangements; corporate versus educational settings; and even whether one is a student or faculty member. Furthermore, newer versions and updates necessitate changes in pricing. Most programs will run in the Windows environment; some run on Macintosh personal computers. We are often asked to recommend a software package to a beginning SEM researcher. However, researchers have different individual needs, and these programs offer a multitude of different features, so we are not able to make such a recommendation. Ultimately, the decision depends upon the researcher’s needs and preferences. Consequently, with so many software packages available, we felt it important to narrow our examples in the book to LISREL–SIMPLIS programs.

We will therefore be using the LISREL 8.8 student version in the book to demonstrate the many different SEM applications, including regression models, path models, confirmatory factor models, and the various SEM models in chapters 13 through 16. The free student version of the LISREL software program (Windows, Mac, and Linux editions) can be downloaded from the website: http://www.ssicentral.com/lisrel/student.html. (Note: When you install the software, the LISREL 8.8 Student Examples folder is placed in the main directory C:/ of your computer, not in the LISREL folder under C:/Program Files.)


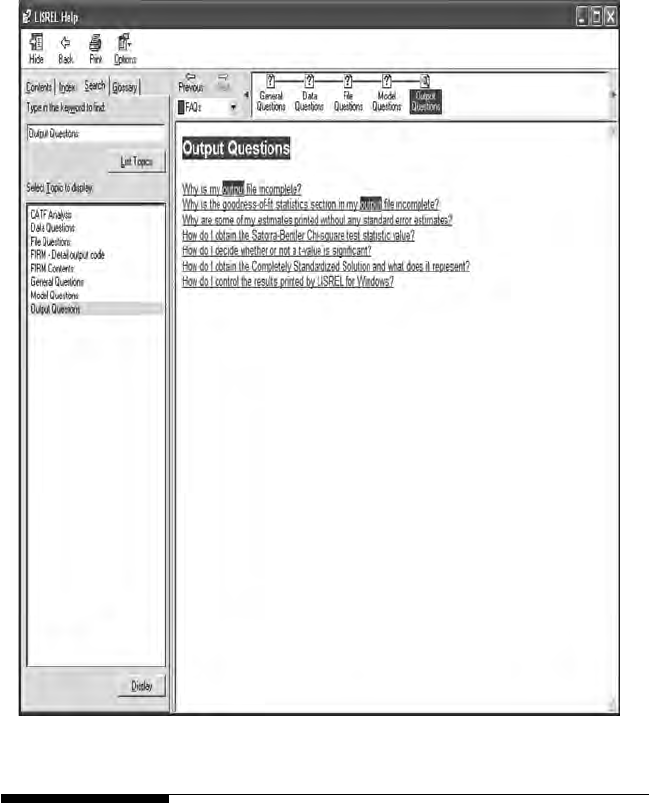

Once the LISREL software is downloaded, place an icon on your desk-

top by creating a shortcut to the LISREL icon. The LISREL icon should

look something like this:

LISREL 8.80 Student.lnk

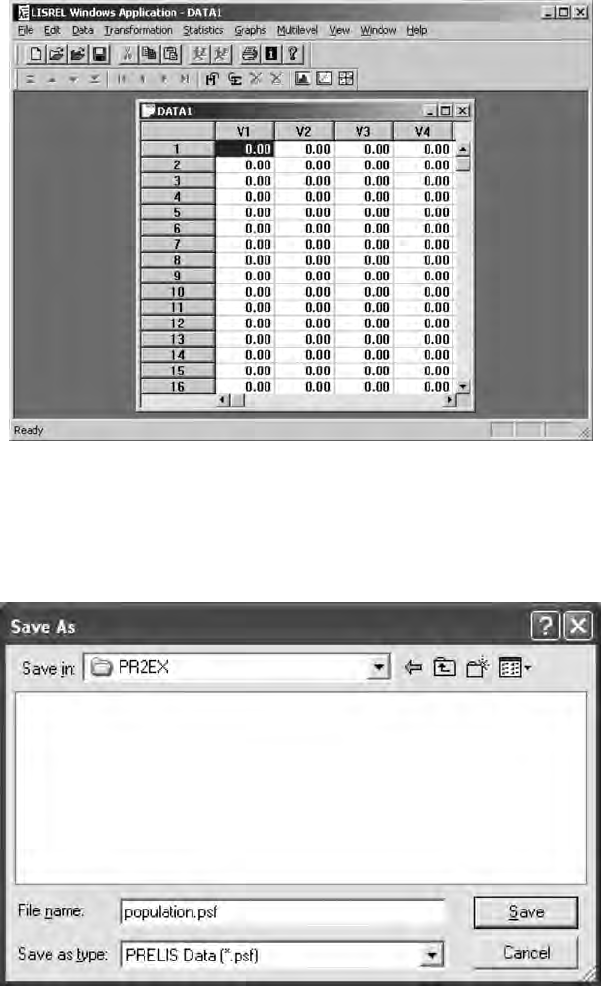

When you click on the icon, an empty dialog box will appear that should

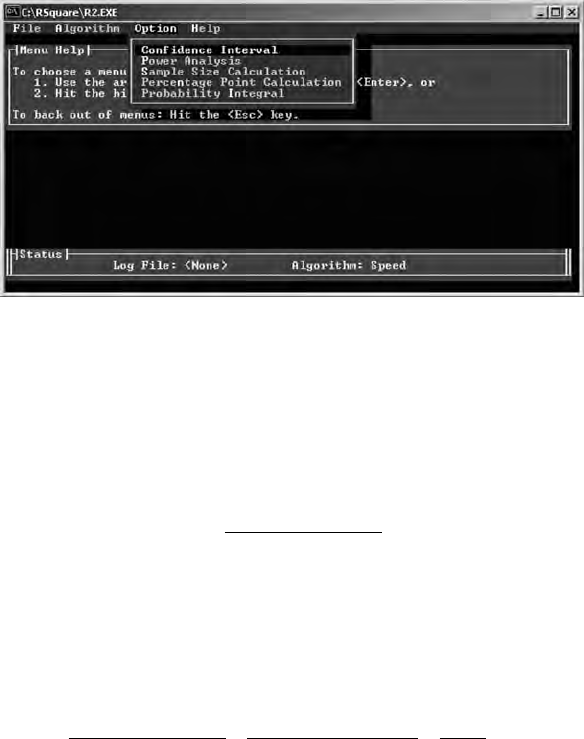

look like this:

NOTE: Nothing appears until you open a program le or data set using

the File or open folder icon; more about this in the next chapter.

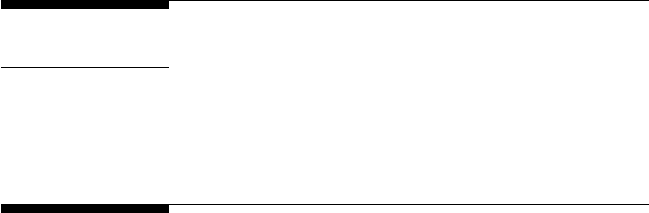

We do want to mention the very useful HELP menu. Click on the question mark (?). When the HELP menu appears, enter Output Questions in the search window to find answers to key questions you may have when going over examples in the Third Edition.


1.5 Summary

In this chapter we introduced structural equation modeling by describing basic types of variables (latent, observed, independent, and dependent) and basic types of SEM models (regression, path, confirmatory factor, and structural equation models). In addition, we provided a brief history of structural equation modeling, followed by a discussion of the importance of SEM. The chapter concluded with a brief list of the different structural equation modeling software programs and where to obtain the LISREL 8.8 student version for use with the examples in the book, including what the dialog box looks like when it first appears and the very useful HELP menu.

In chapter 2 we consider the importance of examining data for issues related to measurement level (nominal, ordinal, interval, or ratio), restriction of range (fewer than 15 categories), missing data, outliers (extreme values), linearity or nonlinearity, and normality or nonnormality, all of which can affect statistical methods in general and SEM applications in particular.

Exercises

1. Define the following terms:

a. Latent variable

b. Observed variable

c. Dependent variable

d. Independent variable

2. Explain the difference between a dependent latent variable and

a dependent observed variable.

3. Explain the difference between an independent latent variable

and an independent observed variable.

4. List the reasons why a researcher would conduct structural

equation modeling.

5. Download and activate the student version of LISREL: http://www.ssicentral.com

6. Open and import an SPSS or other data file.

References

Anderson, T. W., & Rubin, H. (1956). Statistical inference in factor analysis. In J. Neyman (Ed.), Proceedings of the third Berkeley symposium on mathematical statistics and probability, Vol. V (pp. 111–150). Berkeley: University of California Press.

Cudeck, R., Du Toit, S., & Sörbom, D. (Eds.). (2001). Structural equation modeling: Present and future. A Festschrift in honor of Karl Jöreskog. Lincolnwood, IL: Scientific Software International.

Delucchi, M. (2006). The efficacy of collaborative learning groups in an undergraduate statistics course. College Teaching, 54, 244–248.

Goldberg, L. (1990). An alternative “description of personality”: Big Five factor structure. Journal of Personality and Social Psychology, 59, 1216–1229.

Hershberger, S. L. (2003). The growth of structural equation modeling: 1994–2001. Structural Equation Modeling, 10(1), 35–46.

Howe, W. G. (1955). Some contributions to factor analysis (Report No. ORNL-1919). Oak Ridge National Laboratory, Oak Ridge, Tennessee.

Jöreskog, K. G. (1963). Statistical estimation in factor analysis: A new technique and its foundation. Stockholm: Almqvist & Wiksell.


Jöreskog, K. G. (1969). A general approach to confirmatory maximum likelihood factor analysis. Psychometrika, 34, 183–202.

Jöreskog, K. G. (1973). A general method for estimating a linear structural equation system. In A. S. Goldberger & O. D. Duncan (Eds.), Structural equation models in the social sciences (pp. 85–112). New York: Seminar.

Keesling, J. W. (1972). Maximum likelihood approaches to causal flow analysis. Unpublished doctoral dissertation. Chicago: University of Chicago.

Lawley, D. N. (1958). Estimation in factor analysis under various initial assumptions. British Journal of Statistical Psychology, 11, 1–12.

Parkerson, J. A., Lomax, R. G., Schiller, D. P., & Walberg, H. J. (1984). Exploring causal models of educational achievement. Journal of Educational Psychology, 76, 638–646.

Pearson, E. S. (1938). Karl Pearson. An appreciation of some aspects of his life and work. Cambridge: Cambridge University Press.

Shumow, L., & Lomax, R. G. (2002). Parental efficacy: Predictor of parenting behavior and adolescent outcomes. Parenting: Science and Practice, 2, 127–150.

Spearman, C. (1904). The proof and measurement of association between two things. American Journal of Psychology, 15, 72–101.

Spearman, C. (1927). The abilities of man. New York: Macmillan.

Wiley, D. E. (1973). The identification problem for structural equation models with unmeasured variables. In A. S. Goldberger & O. D. Duncan (Eds.), Structural equation models in the social sciences (pp. 69–83). New York: Seminar.

Wright, S. (1918). On the nature of size factors. Genetics, 3, 367–374.

Wright, S. (1921). Correlation and causation. Journal of Agricultural Research, 20, 557–585.

Wright, S. (1934). The method of path coefficients. Annals of Mathematical Statistics, 5, 161–215.


2 Data Entry and Data Editing Issues

Key Concepts

Importing data file

System file

Measurement scale

Restriction of range

Missing data

Outliers

Linearity

Nonnormality

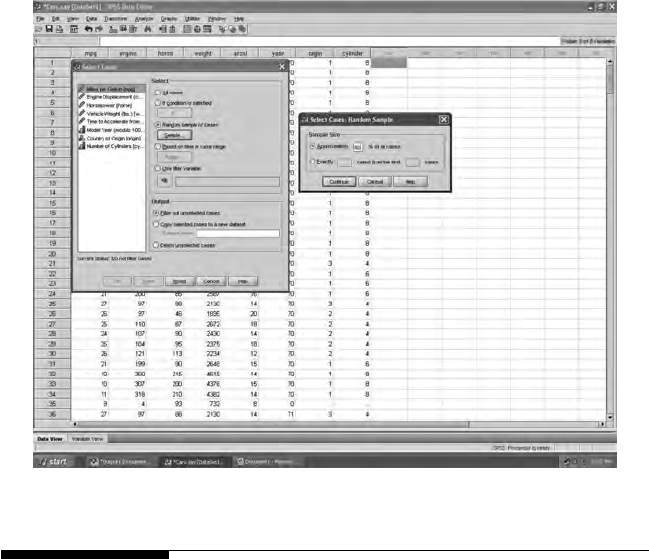

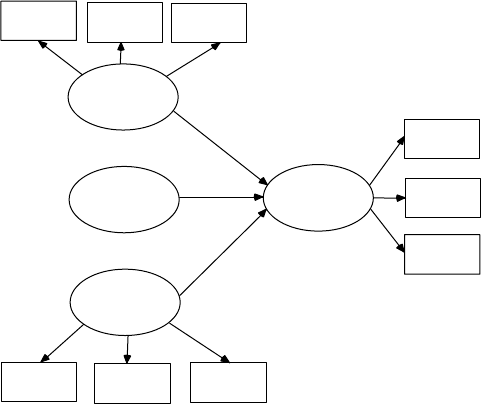

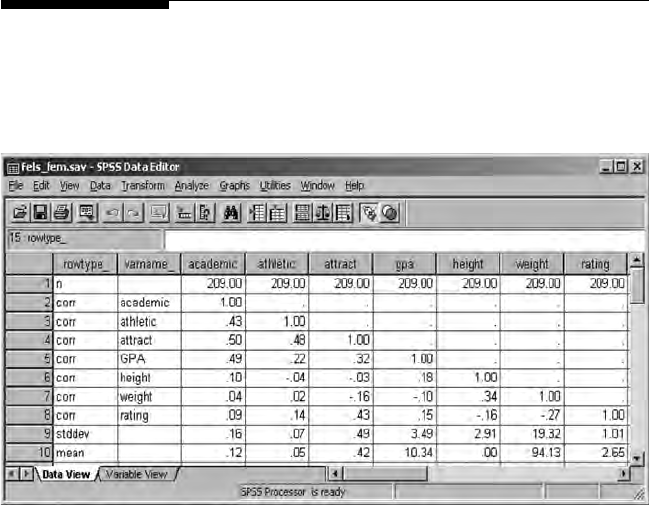

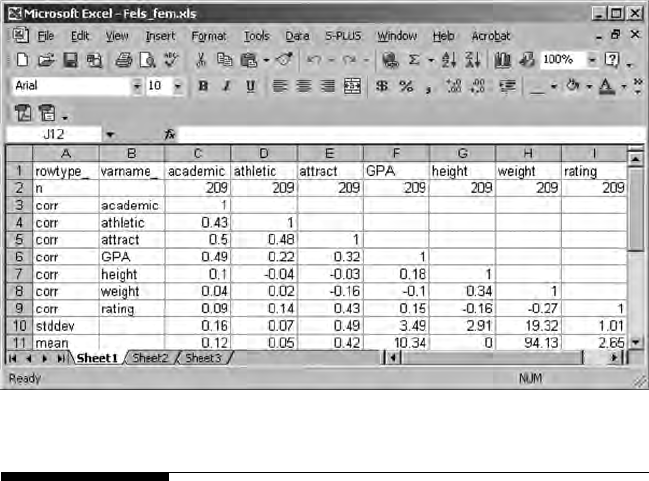

An important first step in using LISREL is being able to enter raw data and/or import data, such as files from other programs (SPSS, SAS, EXCEL, etc.). Other important steps involve using LISREL–PRELIS to save a system file, as well as to output and save files that contain the variance–covariance matrix, the correlation matrix, and the means and standard deviations of variables so they can be input into command syntax programs. The LISREL–PRELIS program is briefly explained in this chapter to demonstrate how it handles raw data entry, importing of data, and the output of saved files.

Several key data issues in the field of statistics affect our analyses once data have been imported into a software program: the measurement scale of variables, restriction in the range of data, missing data values, outliers, linearity, and nonnormality. We discuss each of these issues because they not only affect traditional statistics but also present additional problems and concerns in structural equation modeling.

We use LISREL software throughout the book, so you will need to use that software and become familiar with its developer's Web site. By now you should have downloaded the free student version of the LISREL software.


We use some of the data and model examples available in the free student version to illustrate SEM applications. (Note: The LISREL 8.8 Student Examples folder is placed in the main directory C:/ of your computer.) The free student version of the software has a user guide, help functions, and tutorials. The Web site also contains important research, documentation, and information about structural equation modeling. However, be aware that the free student version does not have the full capabilities of the licensed version (e.g., it is restricted to 15 observed variables in SEM analyses). These limitations are spelled out on the Web site.

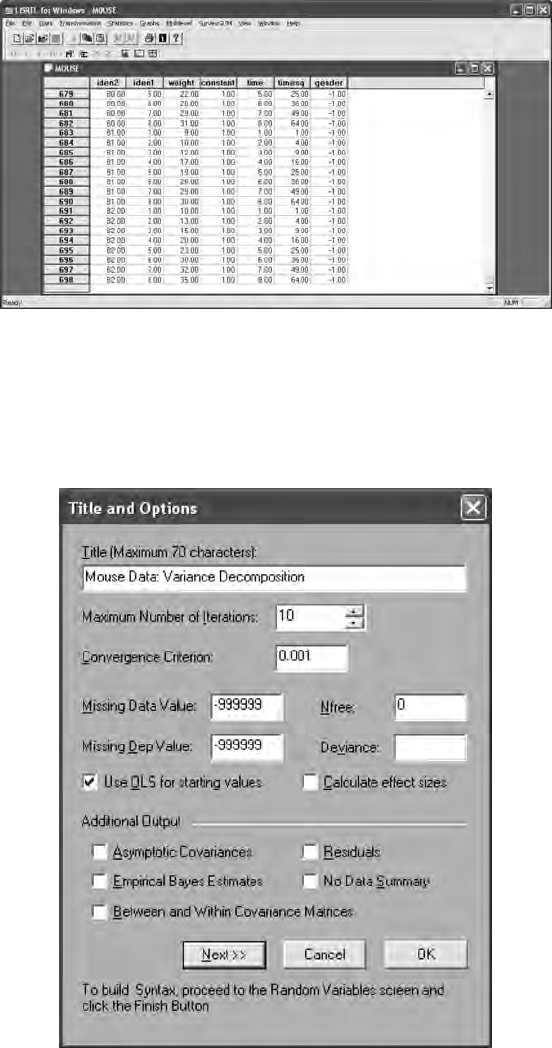

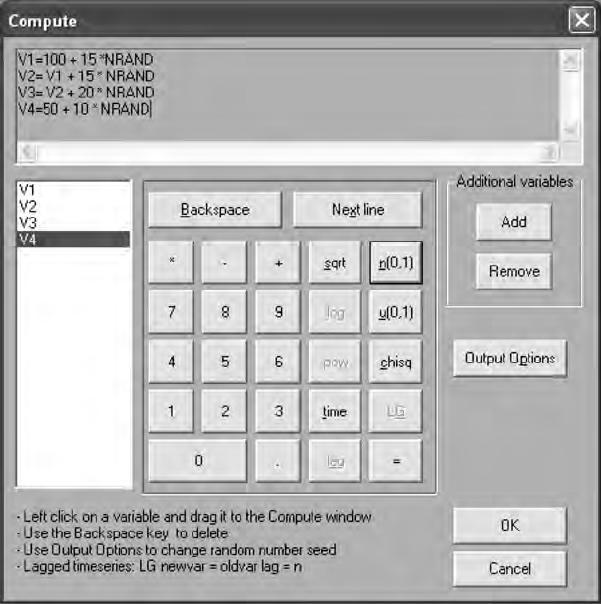

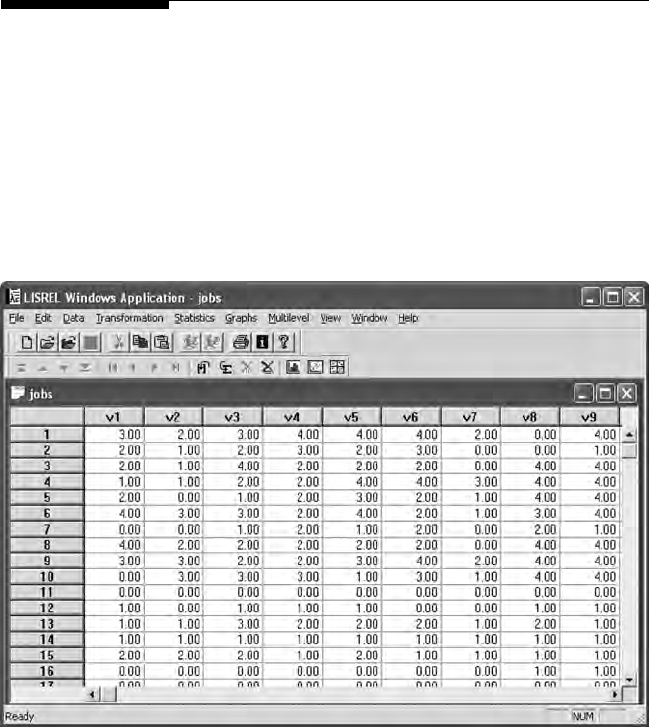

2.1 Data Entry

The LISREL software program interfaces with PRELIS, a program that preprocesses data before LISREL (matrix command language) or SIMPLIS (easier-to-use variable name syntax) programs are run. The newer Interactive LISREL uses a spreadsheet format for data with pull-down menu options. LISREL offers several different options for inputting data and importing files from numerous other programs. The New, Open, and Import Data functions provide maximum flexibility for inputting data.

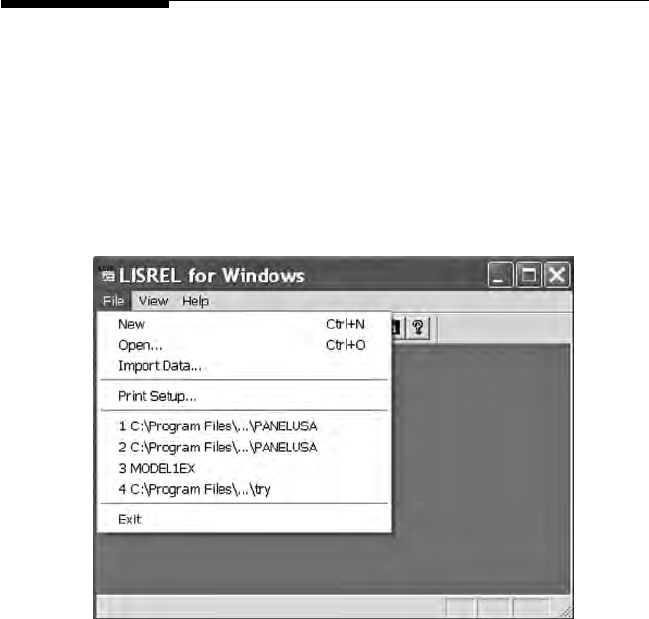

The New option permits the creation of a command syntax language

program (PRELIS, LISREL, or SIMPLIS) to read in a PRELIS data le, or

Y102005.indb 14 3/22/10 3:25:12 PM

Data Entry and Data Editing Issues 15

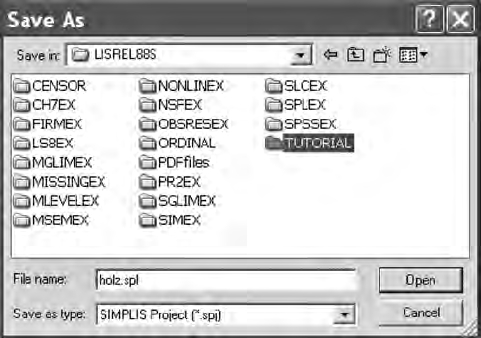

to open SIMPLIS and LISREL saved projects as well as a previously saved

Path Diagram.

The Open option permits you to browse and locate previously saved PRELIS (.pr2), LISREL (.ls8), or SIMPLIS (.spl) programs, each with its own unique file extension. The student version has distinct folders containing several example programs: LISREL (LS8EX folder), PRELIS (PR2EX folder), and SIMPLIS (SPLEX folder).
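Because each program type has its own file extension, a folder of examples can be sorted by extension alone. The Python sketch that follows is only an illustration outside LISREL: `program_type` and `group_examples` are hypothetical helpers, only the three extensions named above are recognized, and any folder path you pass in (for example, wherever the SPLEX folder sits on your machine) is an assumption about your installation.

```python
from pathlib import Path

# The three program file extensions named in the text (hypothetical lookup table).
EXTENSIONS = {
    ".pr2": "PRELIS syntax program",
    ".ls8": "LISREL syntax program",
    ".spl": "SIMPLIS syntax program",
}


def program_type(filename):
    """Return the program type for a file name, or None if the extension is not listed."""
    return EXTENSIONS.get(Path(filename).suffix.lower())


def group_examples(folder):
    """Group the file names in a folder by program type, skipping unrecognized files."""
    groups = {}
    for path in sorted(Path(folder).iterdir()):
        kind = program_type(path.name)
        if kind is not None:
            groups.setdefault(kind, []).append(path.name)
    return groups
```

For example, `program_type("EX1.SPL")` returns "SIMPLIS syntax program" because the comparison ignores case, while a raw data file such as lsat6.dat returns None.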

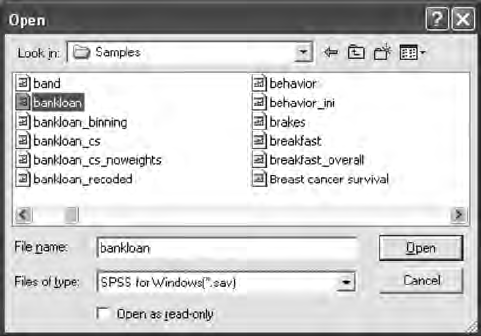

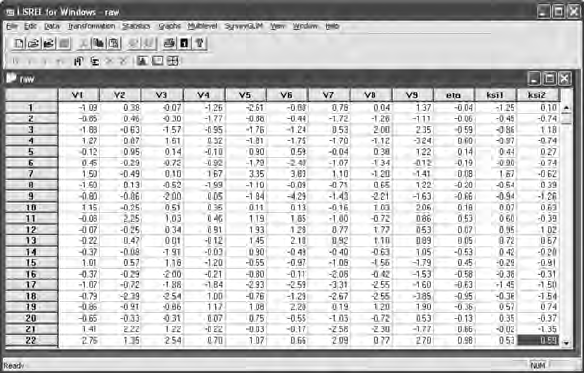

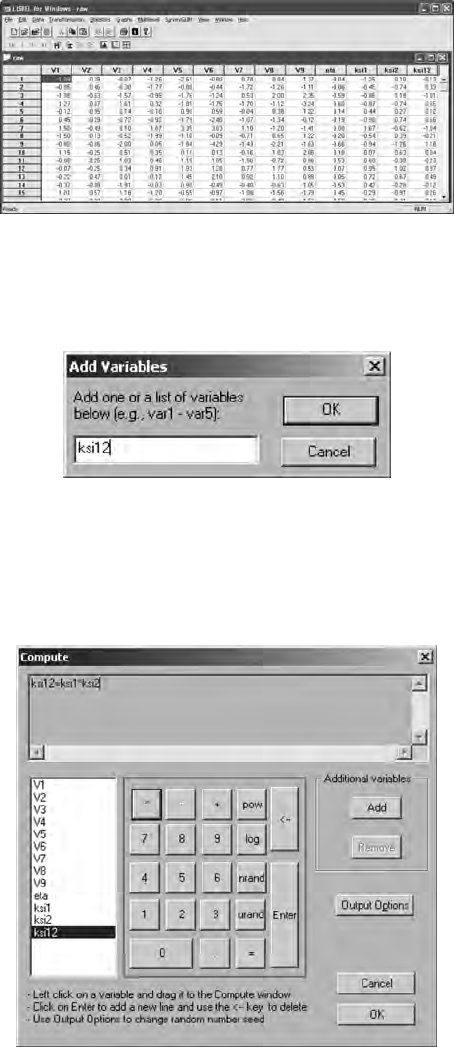

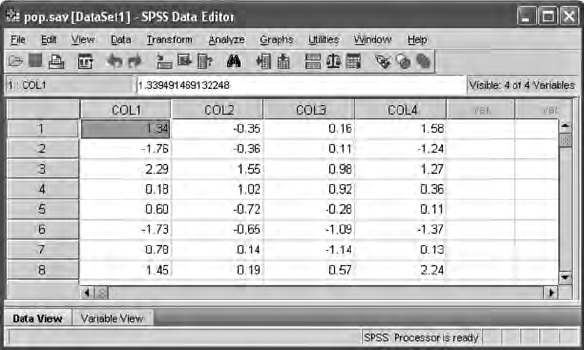

The Import Data option permits inputting raw data les or SPSS

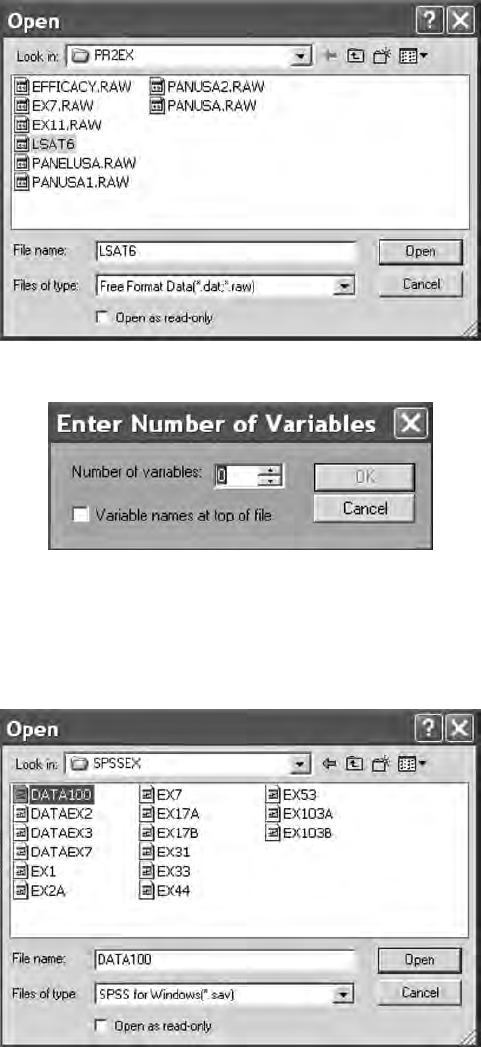

saved les. The raw data le, lsat6.dat, is in the PRELIS folder (PR2EX).

When selecting this le, you will need to know the number of variables

in the le.
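Why the number of variables matters can be illustrated with a short Python sketch. It assumes a free-format file, meaning values separated by white space with no fixed column layout, so line breaks do not mark where one case ends; only the number of variables does. `read_free_format` is a hypothetical helper written for this illustration, not how PRELIS itself reads data, and the six values are made up rather than taken from lsat6.dat.

```python
def read_free_format(text, n_vars):
    """Split whitespace-separated values into cases of n_vars values each.

    Assumes free format: line breaks carry no meaning, so only n_vars
    determines where one case ends and the next begins.
    """
    values = [float(token) for token in text.split()]
    if len(values) % n_vars != 0:
        raise ValueError(
            f"{len(values)} values cannot be split into cases of {n_vars} variables"
        )
    return [values[i:i + n_vars] for i in range(0, len(values), n_vars)]


# Hypothetical raw data: six values written on two lines.
raw = "1 0 1\n0 1 1"
print(read_free_format(raw, 3))  # [[1.0, 0.0, 1.0], [0.0, 1.0, 1.0]]
print(read_free_format(raw, 2))  # [[1.0, 0.0], [1.0, 0.0], [1.0, 1.0]]
```

The same six numbers yield two cases of three variables or three cases of two variables. A count that does not divide the values evenly is caught as an error, but a wrong count that happens to divide them evenly is not, which is why the number of variables must be known in advance.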


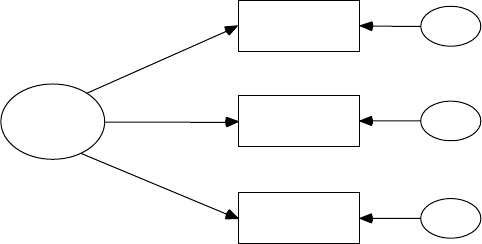

An SPSS saved file, data100.sav, is in the SPSS folder (SPSSEX). When you open this file, a PRELIS system file is created.


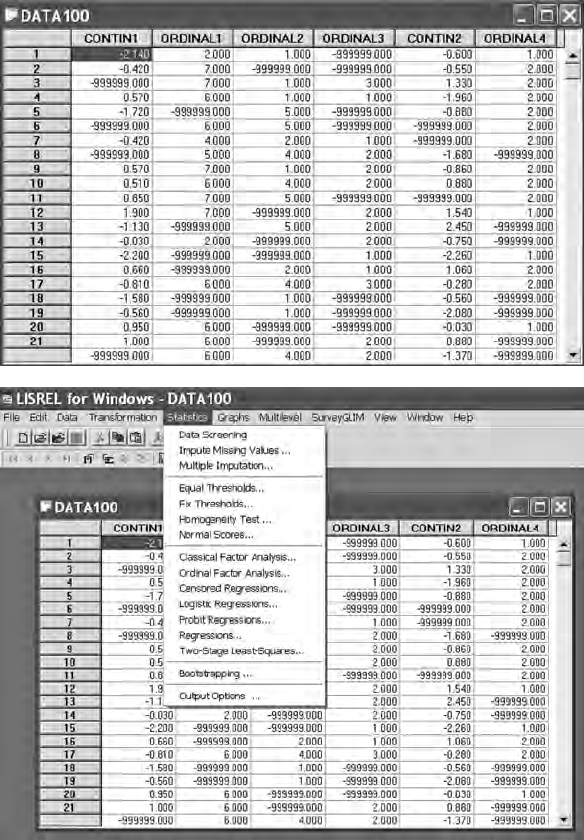

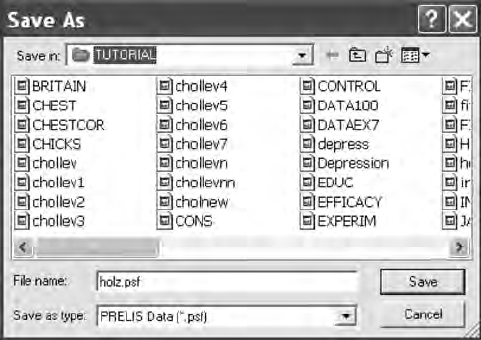

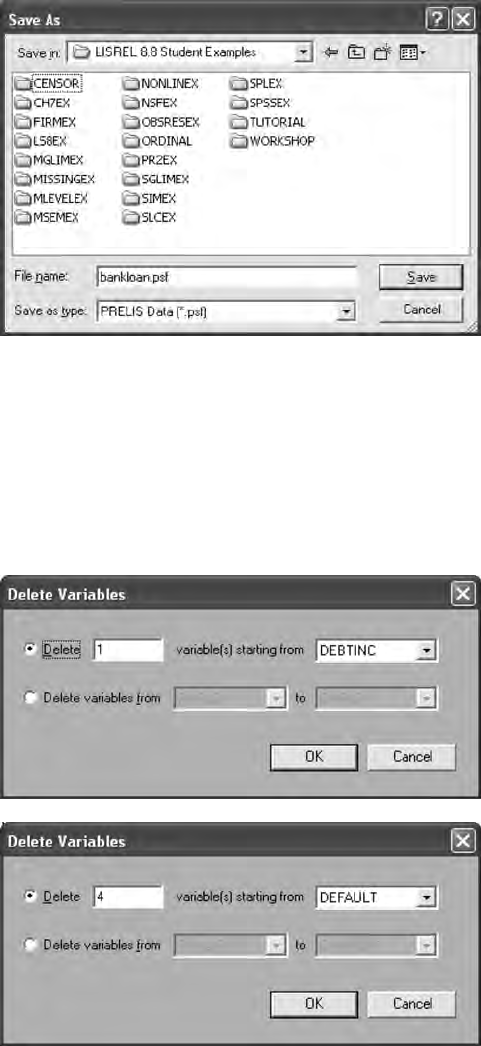

Once the PRELIS system le becomes active, then it needs to be saved for

future use. (Note: # symbol may appear if columns are to narrow; simply use

your mouse to expand the columns so that the missing values—999999.00

will appear. Also, if you right-mouse click on the variable names, a menu

appears to dene missing values, etc.). The PRELIS system le (.psf) acti-

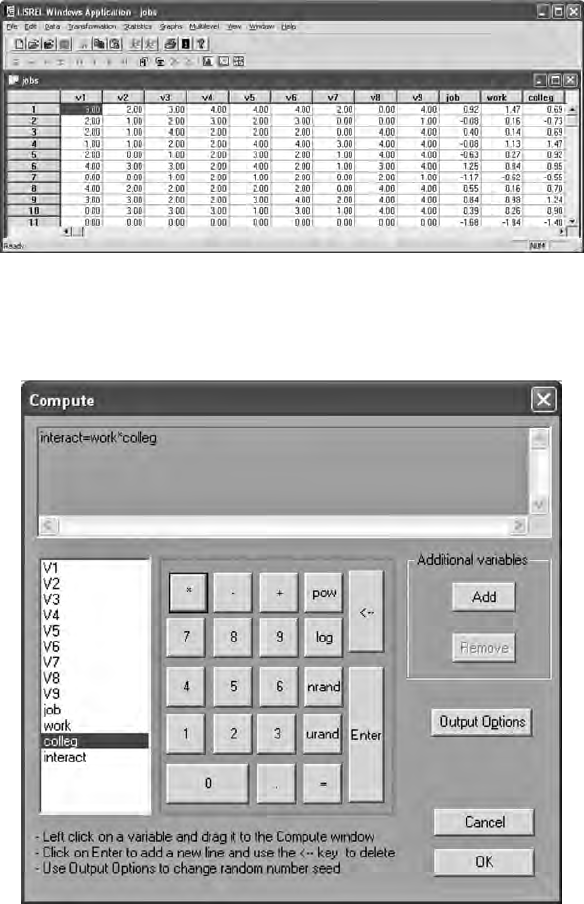

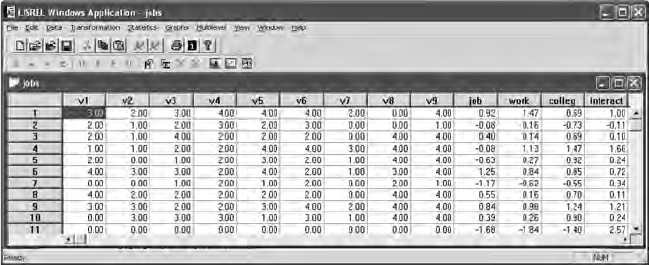

vates a pull-down menu that permits data editing features, data transfor-

mations, statistical analysis of data, graphical display of data, multilevel

modeling, and many other related features.
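The reason missing values need to be defined, rather than left as ordinary numbers, is easy to see with a small calculation. In this Python sketch the scores are invented, `mean_excluding` is a hypothetical helper, and 999999.00 is simply the missing-value code mentioned above; if that code is treated as a real score, it swamps the mean.

```python
import math

MISSING_CODE = 999999.00  # missing-value code as displayed in the PRELIS spreadsheet


def mean_excluding(values, missing=MISSING_CODE):
    """Mean of the values that are not equal to the missing-value code."""
    valid = [v for v in values if v != missing]
    return sum(valid) / len(valid) if valid else math.nan


# Hypothetical scores for four cases; the third case is missing.
scores = [12.0, 15.0, MISSING_CODE, 9.0]

naive_mean = sum(scores) / len(scores)  # the code is wrongly treated as a real score
print(naive_mean)              # 250008.75
print(mean_excluding(scores))  # 12.0
```

Dropping the coded case is only one way of handling missing data (listwise exclusion); the imputation features in PRELIS are an alternative.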


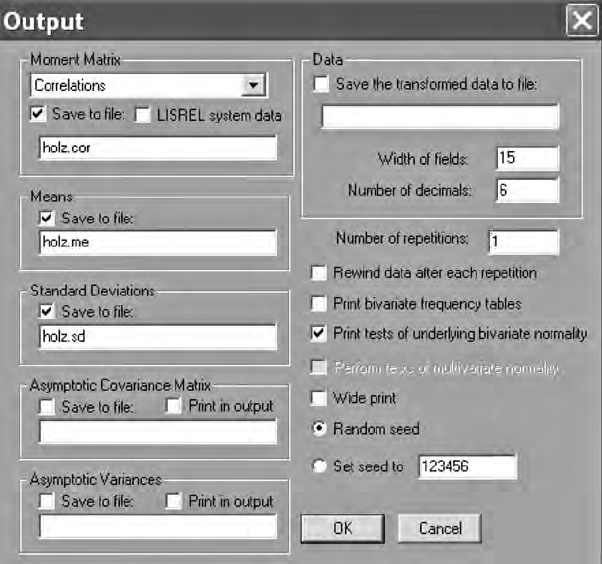

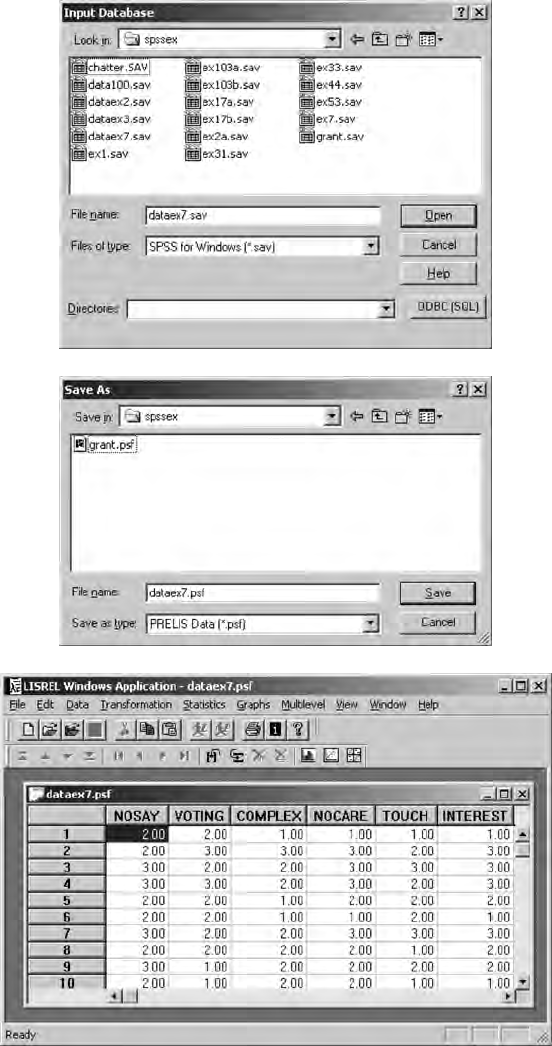

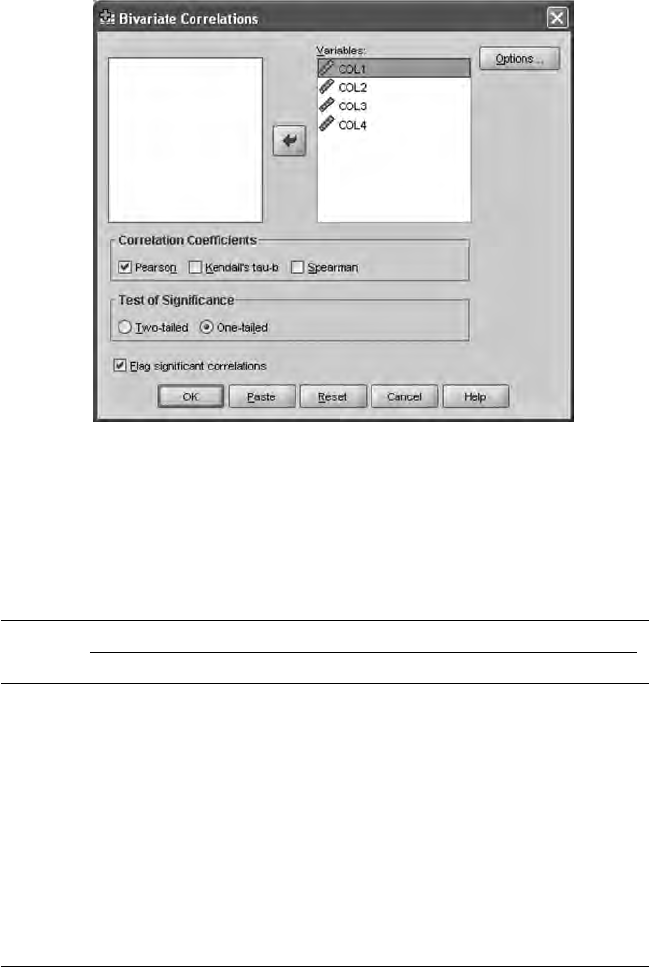

The statistical analysis of data includes factor analysis, probit regres-

sion, least squares regression, and two-stage least squares methods.

Other important data editing features include imputing missing values,

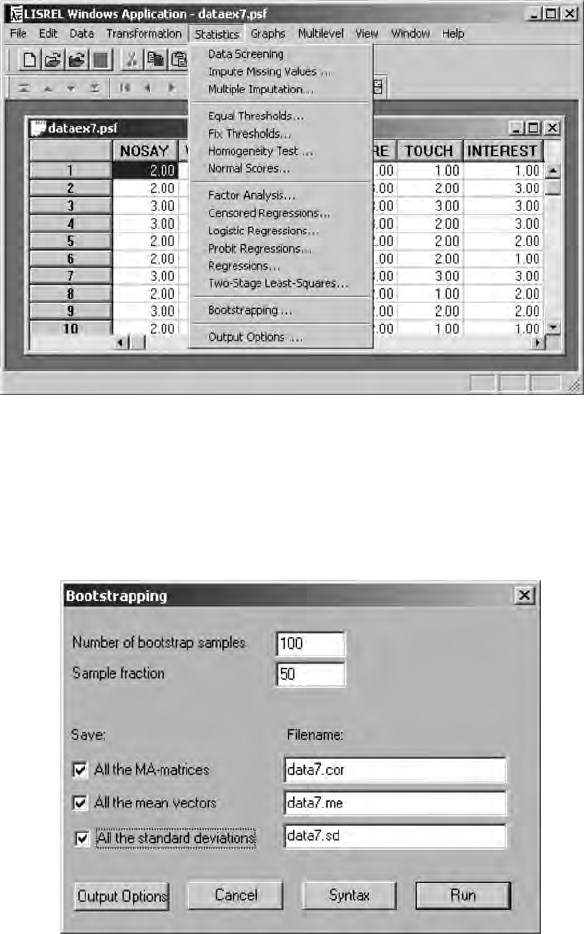

a homogeneity test, creation of normal scores, bootstrapping, and data

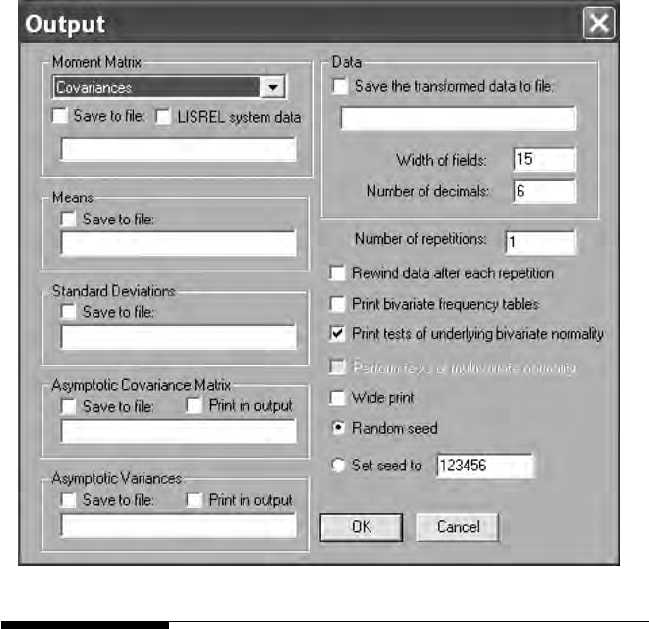

output options. The data output options permit saving different types of

variance–covariance matrices and descriptive statistics in les for use in

LISREL and SIMPLIS command syntax programs. This capability is very

important, especially when advanced SEM models are analyzed in chap-

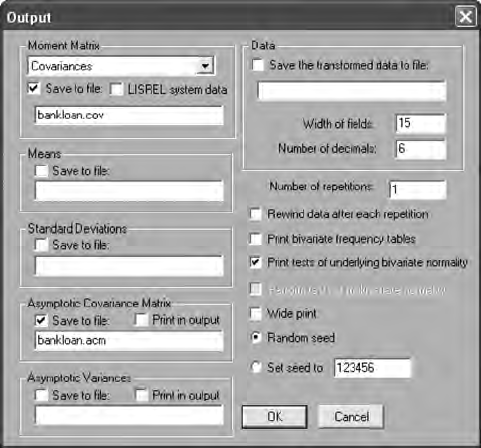

ters 13 to 16. We will demonstrate the use of this Output Options dialog

box in this chapter and in some of our other chapter examples.

2.2 Data Editing Issues

2.2.1 Measurement Scale

How variables are measured or scaled influences the type of statistical analyses we perform (Anderson, 1961; Stevens, 1946). Properties of scale also guide our understanding of permissible mathematical operations.


For example, a nominal variable implies mutually exclusive groups; a biological gender has two mutually exclusive groups, male and female. An individual can be in only one of the groups that define the levels of the variable. In addition, it would not be meaningful to calculate a mean and a standard deviation on the variable gender. Consequently, counting the number or percentage of individuals at each level of the gender variable is the only mathematical operation that makes sense. An ordinal variable, for example, attitude toward school scaled strongly agree, agree, neutral, disagree, and strongly disagree, implies mutually exclusive categories that are ordered or ranked. When levels of a variable have properties of scale that involve ordered, mutually exclusive groups, only certain mathematical operations are meaningful, for example, a comparison of ranks between groups. SEM final exam scores, an example of an interval variable, possess the property of scale implying equal intervals between the data points, but no true zero point. This property of scale permits the mathematical operation of computing a mean and a standard deviation. Similarly, a ratio variable, for example, weight, has the property of scale that implies equal intervals and a true zero point (weightlessness). Therefore, ratio variables also permit the mathematical operation of computing a mean and a standard deviation. Our use of different variables requires us to be aware of their properties of scale and of which mathematical operations are possible and meaningful, especially in SEM, where variance–covariance (correlation) matrices are used with means and standard

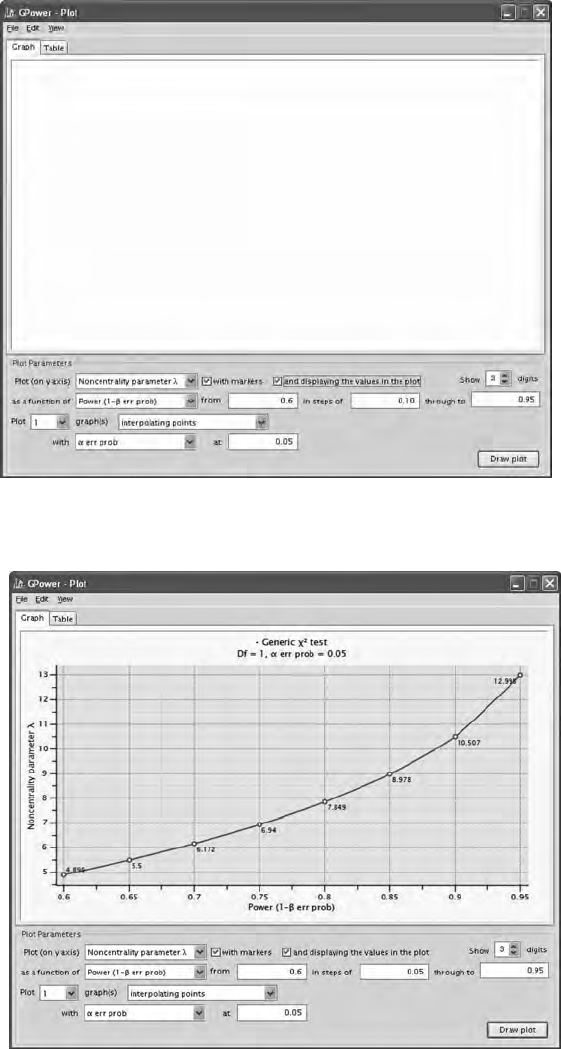

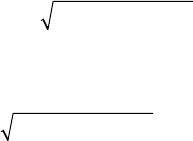

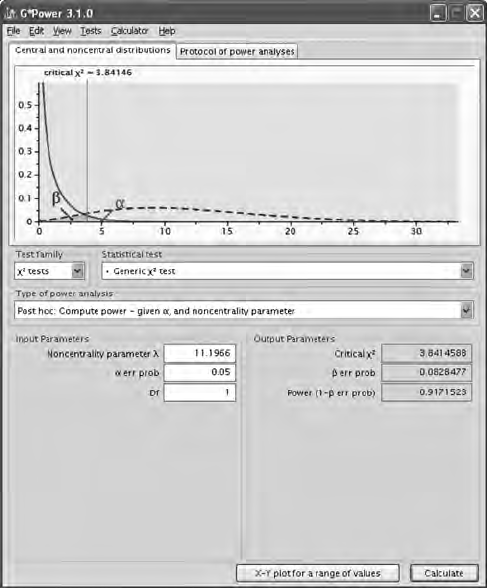

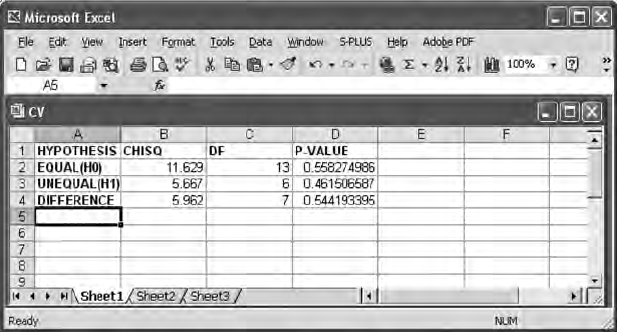

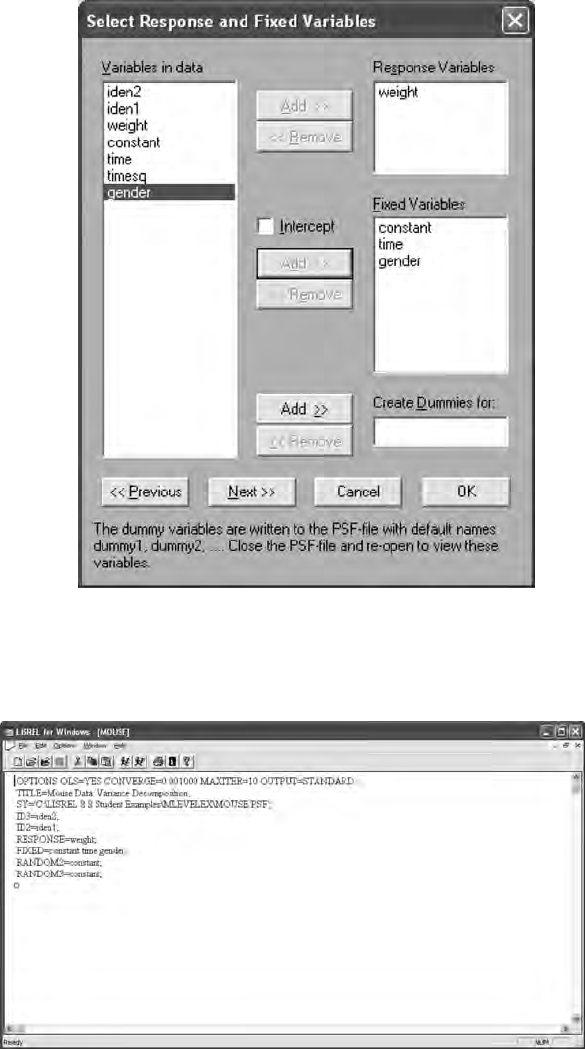

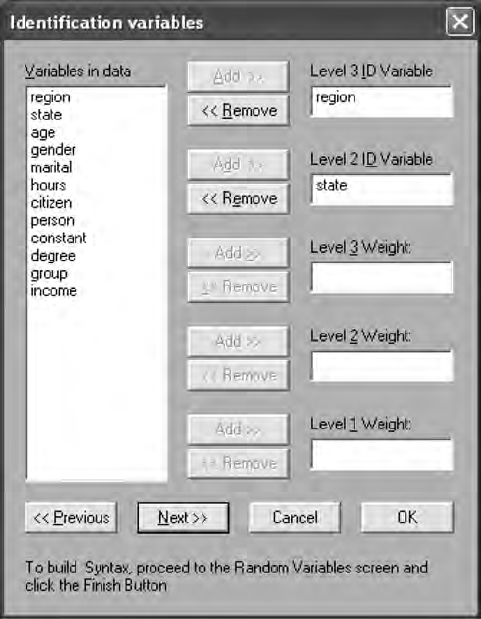

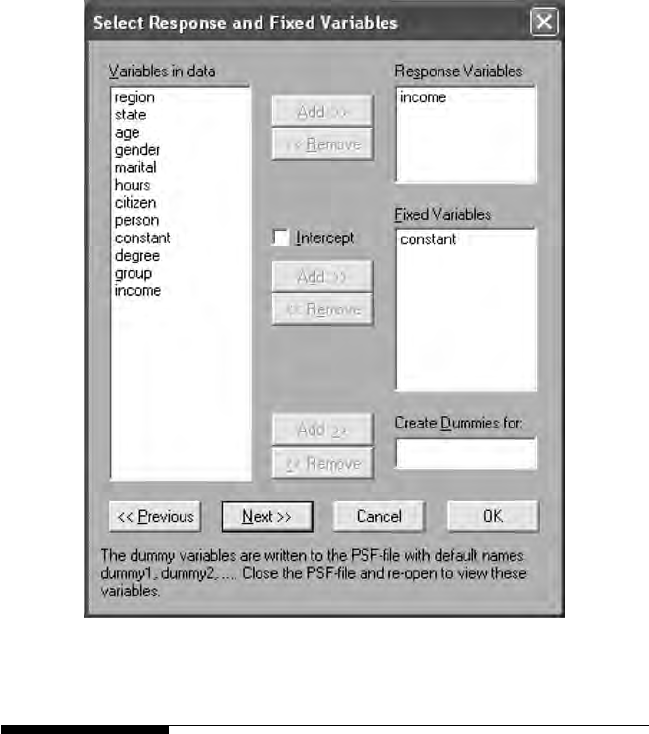

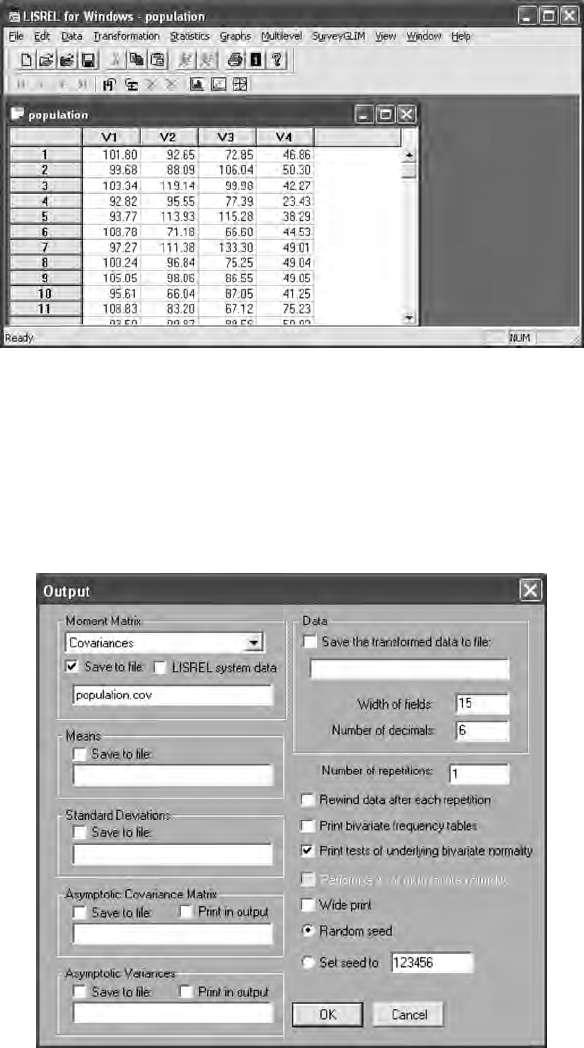

deviations of variables. Different correlations among variables are