Big Data SMACK A Guide To Apache Spark, Mesos, Akka, Cassandra, And Kafka

Page Count: 277

- Contents at a Glance

- Contents

- About the Authors

- About the Technical Reviewer

- Acknowledgments

- Introduction

- Part I: Introduction

- Part II: Playing SMACK

- Chapter 3: The Language: Scala

- Chapter 4: The Model: Akka

- Chapter 5: Storage: Apache Cassandra

- Chapter 6: The Engine: Apache Spark

- Chapter 7: The Manager: Apache Mesos

- Chapter 8: The Broker: Apache Kafka

- Kafka Introduction

- Kafka Installation

- Kafka in Cluster

- Kafka Architecture

- Kafka Producers

- Kafka Consumers

- Kafka Integration

- Kafka Administration

- Summary

- Part III: Improving SMACK

- Chapter 9: Fast Data Patterns

- Fast Data

- ACID vs. CAP

- Integrating Streaming and Transactions

- Streaming Transformations

- Fault Recovery Strategies

- Tag Data Identifiers

- Summary

- Chapter 10: Data Pipelines

- Data Pipeline Strategies and Principles

- Asynchronous Message Passing

- Consensus and Gossip

- Data Locality

- Failure Detection

- Fault Tolerance/No Single Point of Failure

- Isolation

- Location Transparency

- Parallelism

- Partition for Scale

- Replay for Any Point of Failure

- Replicate for Resiliency

- Scalable Infrastructure

- Share Nothing/Masterless

- Dynamo Systems Principles

- Spark and Cassandra

- Akka and Kafka

- Akka and Cassandra

- Akka and Spark

- Kafka and Cassandra

- Summary

- Chapter 11: Glossary

- ACID

- agent

- API

- BI

- big data

- CAP

- CEP

- client-server

- cloud

- cluster

- column family

- coordinator

- CQL

- CQLSH

- concurrency

- commutative operations

- CRDTs

- dashboard

- data feed

- DBMS

- determinism

- dimension data

- distributed computing

- driver

- ETL

- exabyte

- exponential backoff

- failover

- fast data

- gossip

- graph database

- HDFS

- HTAP

- IaaS

- idempotence

- IMDG

- IoT

- key-value

- keyspace

- latency

- master-slave

- metadata

- NoSQL

- operational analytics

- RDBMS

- real-time analytics

- replication

- PaaS

- probabilistic data structures

- SaaS

- scalability

- shared nothing

- Spark-Cassandra Connector

- streaming analytics

- synchronization

- unstructured data

- Index

Big Data SMACK

A Guide to Apache Spark, Mesos, Akka, Cassandra, and Kafka

Raul Estrada

Isaac Ruiz

Big Data SMACK: A Guide to Apache Spark, Mesos, Akka, Cassandra, and Kafka

Raul Estrada, Mexico City, Mexico

Isaac Ruiz, Mexico City, Mexico

ISBN-13 (pbk): 978-1-4842-2174-7 ISBN-13 (electronic): 978-1-4842-2175-4

DOI 10.1007/978-1-4842-2175-4

Library of Congress Control Number: 2016954634

Copyright © 2016 by Raul Estrada and Isaac Ruiz

This work is subject to copyright. All rights are reserved by the Publisher, whether the whole or part of the material is concerned, specifically the rights of translation, reprinting, reuse of illustrations, recitation, broadcasting, reproduction on microfilms or in any other physical way, and transmission or information storage and retrieval, electronic adaptation, computer software, or by similar or dissimilar methodology now known or hereafter developed.

Trademarked names, logos, and images may appear in this book. Rather than use a trademark symbol with every occurrence of a trademarked name, logo, or image we use the names, logos, and images only in an editorial fashion and to the benefit of the trademark owner, with no intention of infringement of the trademark.

The use in this publication of trade names, trademarks, service marks, and similar terms, even if they are not identified as such, is not to be taken as an expression of opinion as to whether or not they are subject to proprietary rights.

While the advice and information in this book are believed to be true and accurate at the date of publication, neither the authors nor the editors nor the publisher can accept any legal responsibility for any errors or omissions that may be made. The publisher makes no warranty, express or implied, with respect to the material contained herein.

Managing Director: Welmoed Spahr

Acquisitions Editor: Susan McDermott

Developmental Editor: Laura Berendson

Technical Reviewer: Rogelio Vizcaino

Editorial Board: Steve Anglin, Pramila Balen, Laura Berendson, Aaron Black, Louise Corrigan, Jonathan Gennick, Robert Hutchinson, Celestin Suresh John, Nikhil Karkal, James Markham, Susan McDermott, Matthew Moodie, Natalie Pao, Gwenan Spearing

Coordinating Editor: Rita Fernando

Copy Editor: Kim Burton-Weisman

Compositor: SPi Global

Indexer: SPi Global

Cover Image: Designed by Harryarts - Freepik.com

Distributed to the book trade worldwide by Springer Science+Business Media New York, 233 Spring Street, 6th Floor, New York, NY 10013. Phone 1-800-SPRINGER, fax (201) 348-4505, e-mail orders-ny@springer-sbm.com, or visit www.springer.com. Apress Media, LLC is a California LLC and the sole member (owner) is Springer Science + Business Media Finance Inc (SSBM Finance Inc). SSBM Finance Inc is a Delaware corporation.

For information on translations, please e-mail rights@apress.com, or visit www.apress.com.

Apress and friends of ED books may be purchased in bulk for academic, corporate, or promotional use. eBook versions and licenses are also available for most titles. For more information, reference our Special Bulk Sales–eBook Licensing web page at www.apress.com/bulk-sales.

Any source code or other supplementary materials referenced by the author in this text are available to readers at www.apress.com. For detailed information about how to locate your book’s source code, go to www.apress.com/source-code/.

Printed on acid-free paper

I dedicate this book to my mom and all the masters out there.

—Raúl Estrada

For all Binnizá people.

—Isaac Ruiz

Contents at a Glance

About the Authors

About the Technical Reviewer

Acknowledgments

Introduction

■Part I: Introduction

■Chapter 1: Big Data, Big Challenges

■Chapter 2: Big Data, Big Solutions

■Part II: Playing SMACK

■Chapter 3: The Language: Scala

■Chapter 4: The Model: Akka

■Chapter 5: Storage: Apache Cassandra

■Chapter 6: The Engine: Apache Spark

■Chapter 7: The Manager: Apache Mesos

■Chapter 8: The Broker: Apache Kafka

■Part III: Improving SMACK

■Chapter 9: Fast Data Patterns

■Chapter 10: Data Pipelines

■Chapter 11: Glossary

Index

Contents

About the Authors

About the Technical Reviewer

Acknowledgments

Introduction

■Part I: Introduction

■Chapter 1: Big Data, Big Challenges

Big Data Problems

Infrastructure Needs

ETL

Lambda Architecture

Hadoop

Data Center Operation

The Open Source Reign

The Data Store Diversification

Is SMACK the Solution?

■Chapter 2: Big Data, Big Solutions

Traditional vs. Modern (Big) Data

SMACK in a Nutshell

Apache Spark vs. MapReduce

The Engine

The Model

The Broker

The Storage ....................................................................................................................16

The Container ................................................................................................................. 16

Summary ........................................................................................................................16

■Part II: Playing SMACK ........................................................................................ 17

■Chapter 3: The Language: Scala .......................................................................... 19

Functional Programming ................................................................................................ 19

Predicate ..............................................................................................................................................19

Literal Functions ...................................................................................................................................20

Implicit Loops .......................................................................................................................................20

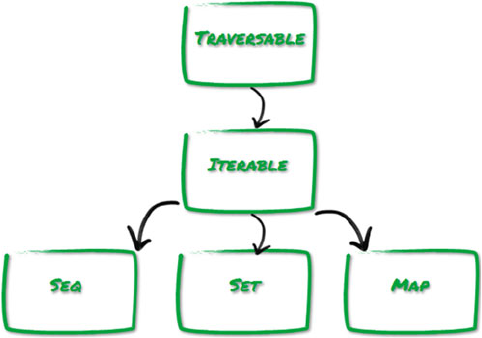

Collections Hierarchy ..................................................................................................... 21

Sequences ............................................................................................................................................21

Maps .....................................................................................................................................................22

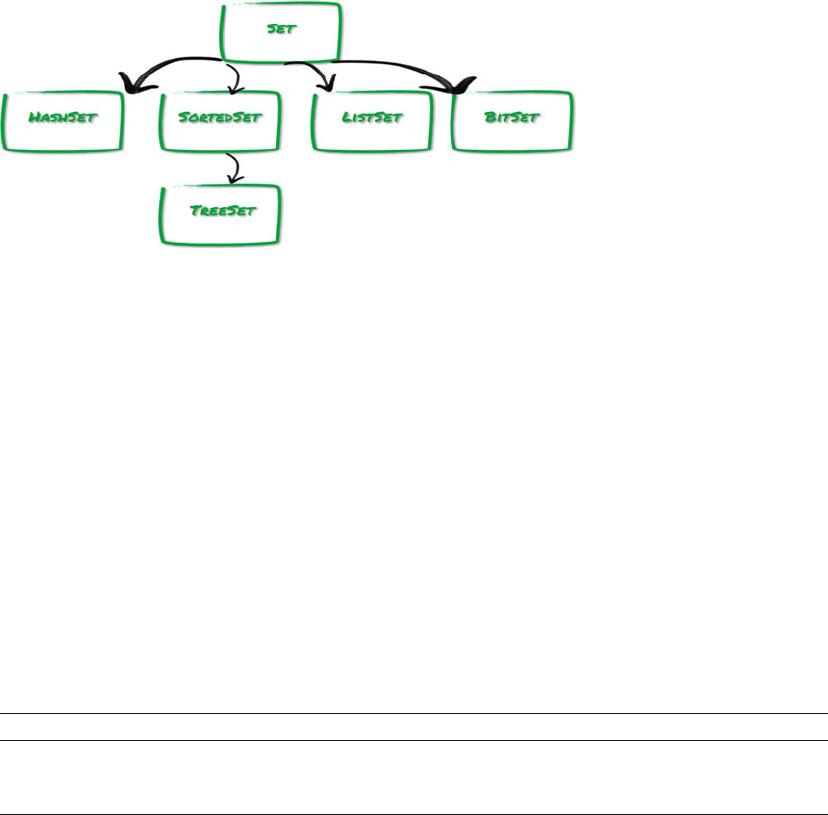

Sets.......................................................................................................................................................23

Choosing Collections ...................................................................................................... 23

Sequences ............................................................................................................................................23

Maps .....................................................................................................................................................24

Sets.......................................................................................................................................................25

Traversing ....................................................................................................................... 25

foreach .................................................................................................................................................25

for .........................................................................................................................................................26

Iterators ................................................................................................................................................27

Mapping ......................................................................................................................... 27

Flattening ....................................................................................................................... 28

Filtering .......................................................................................................................... 29

Extracting ....................................................................................................................... 30

Splitting .......................................................................................................................... 31

Unicity ............................................................................................................................ 32

Merging .......................................................................................................................... 32

Lazy Views ...................................................................................................................... 33

Sorting ............................................................................................................................ 34

Streams .......................................................................................................................... 35

Arrays ............................................................................................................................. 35

ArrayBuffers ...................................................................................................................36

Queues ........................................................................................................................... 37

Stacks ............................................................................................................................ 38

Ranges ........................................................................................................................... 39

Summary ........................................................................................................................40

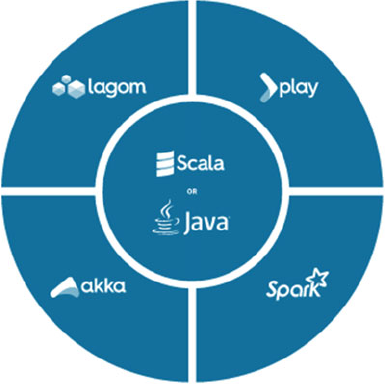

■Chapter 4: The Model: Akka ................................................................................ 41

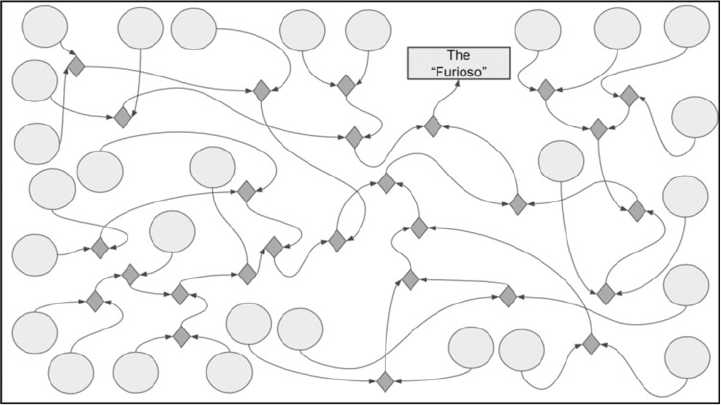

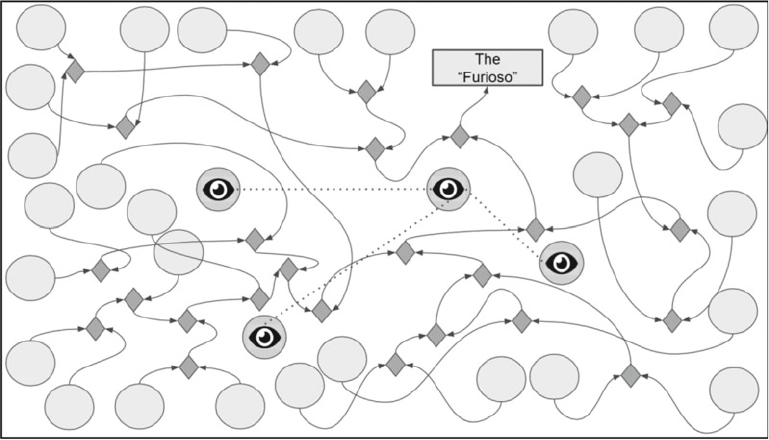

The Actor Model ............................................................................................................. 41

Threads and Labyrinths ........................................................................................................................42

Actors 101 ............................................................................................................................................42

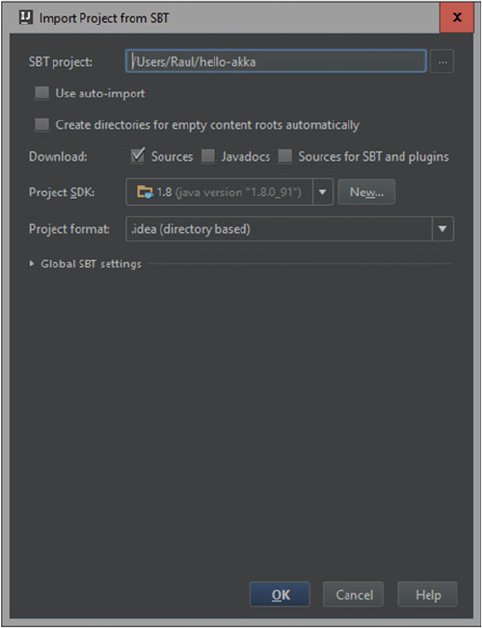

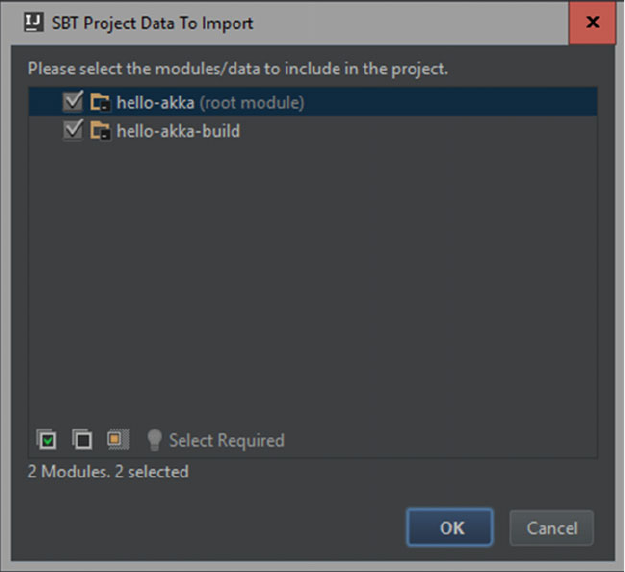

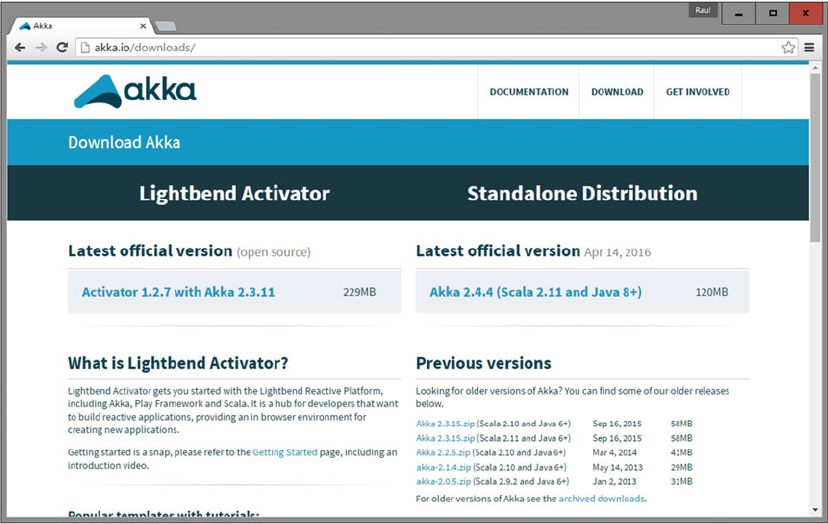

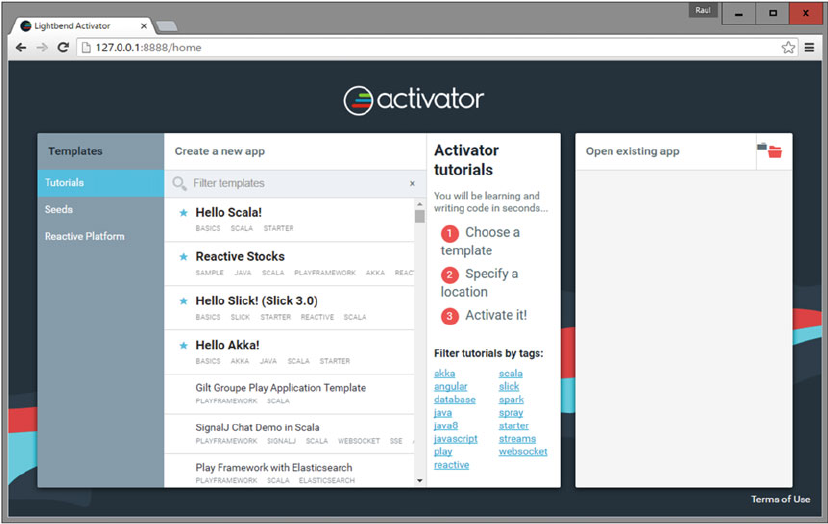

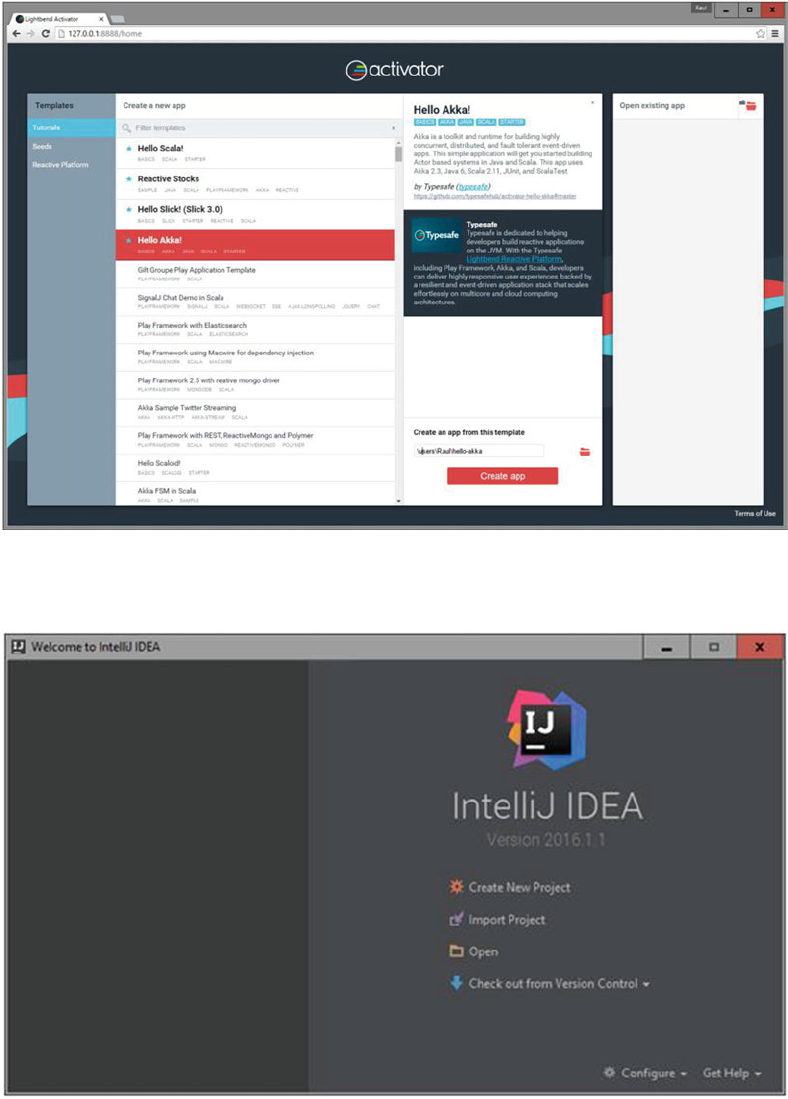

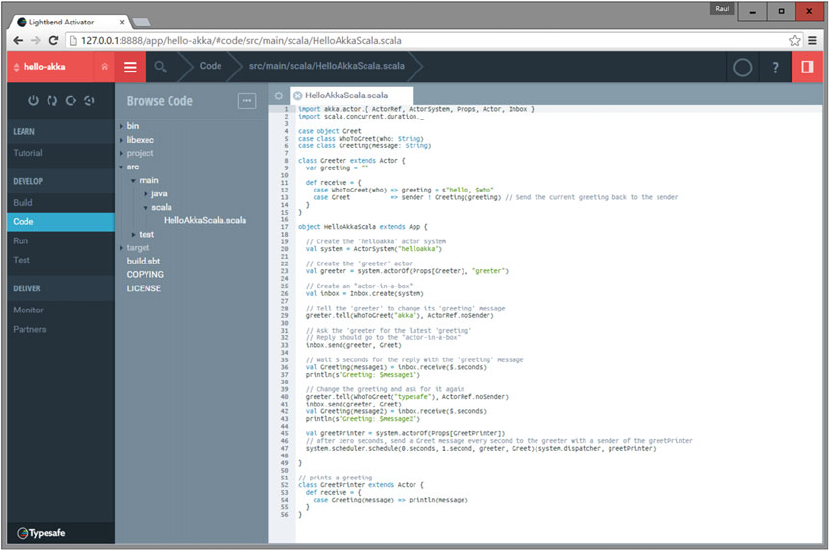

Installing Akka ................................................................................................................44

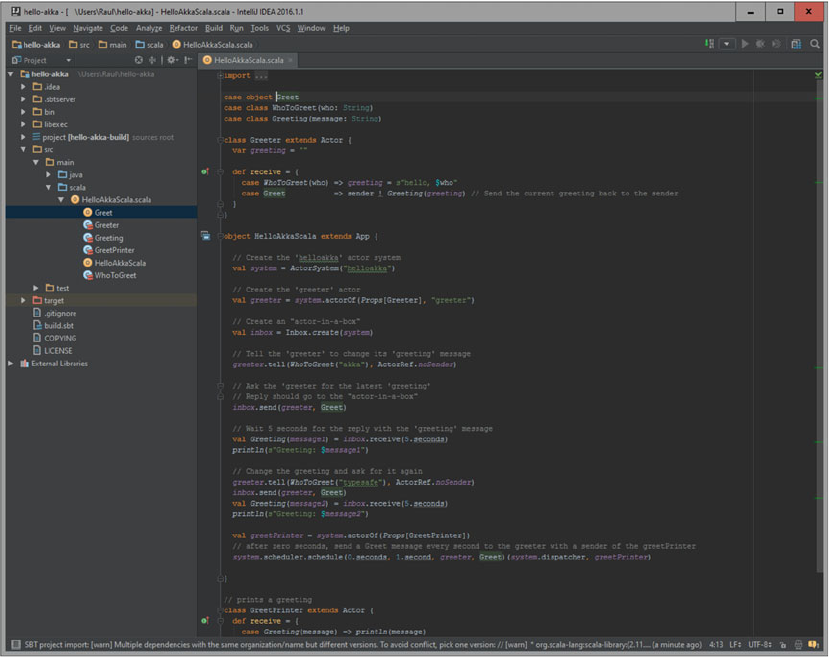

Akka Actors ....................................................................................................................51

Actors ...................................................................................................................................................51

Actor System ........................................................................................................................................53

Actor Reference ....................................................................................................................................53

Actor Communication ...........................................................................................................................54

Actor Lifecycle ......................................................................................................................................56

Starting Actors ......................................................................................................................................58

Stopping Actors ....................................................................................................................................60

Killing Actors .........................................................................................................................................61

Shutting down the Actor System ..........................................................................................................62

Actor Monitoring ...................................................................................................................................62

Looking up Actors .................................................................................................................................63

Actor Code of Conduct ..........................................................................................................................64

Summary ........................................................................................................................66

■Chapter 5: Storage: Apache Cassandra ............................................................... 67

Once Upon a Time... ........................................................................................................ 67

Modern Cassandra................................................................................................................................67

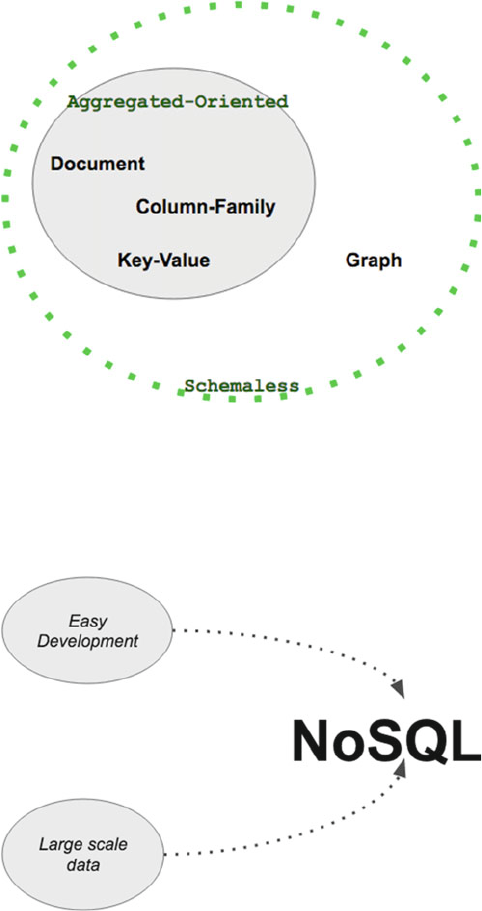

NoSQL Everywhere ......................................................................................................... 67

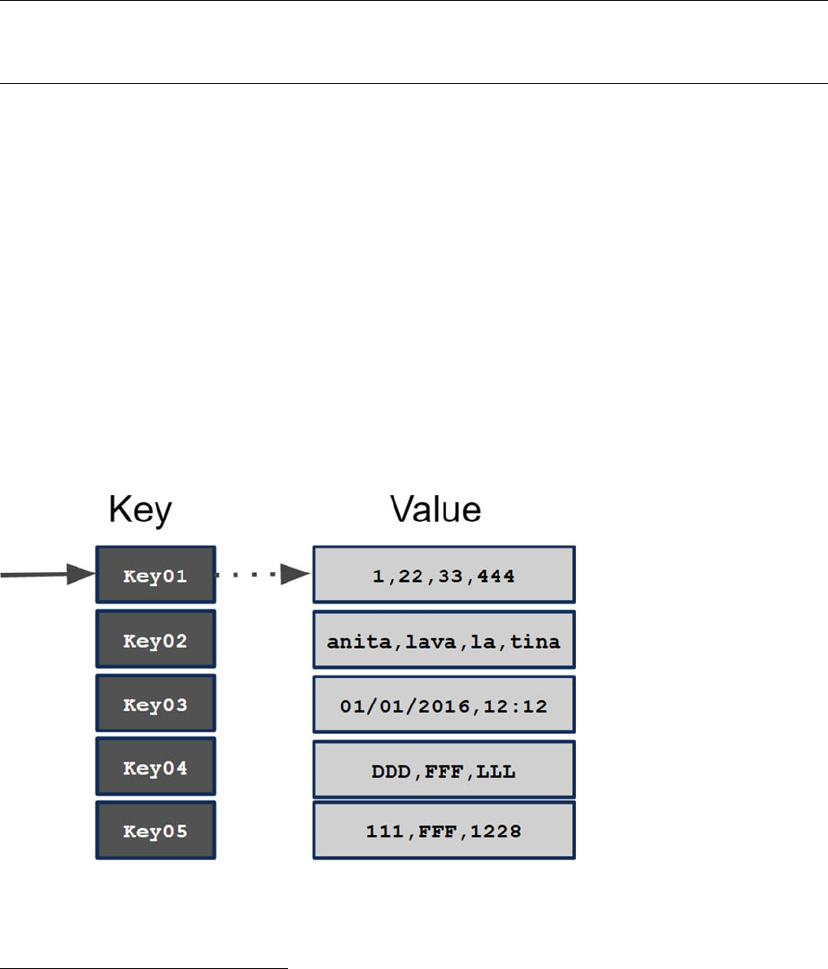

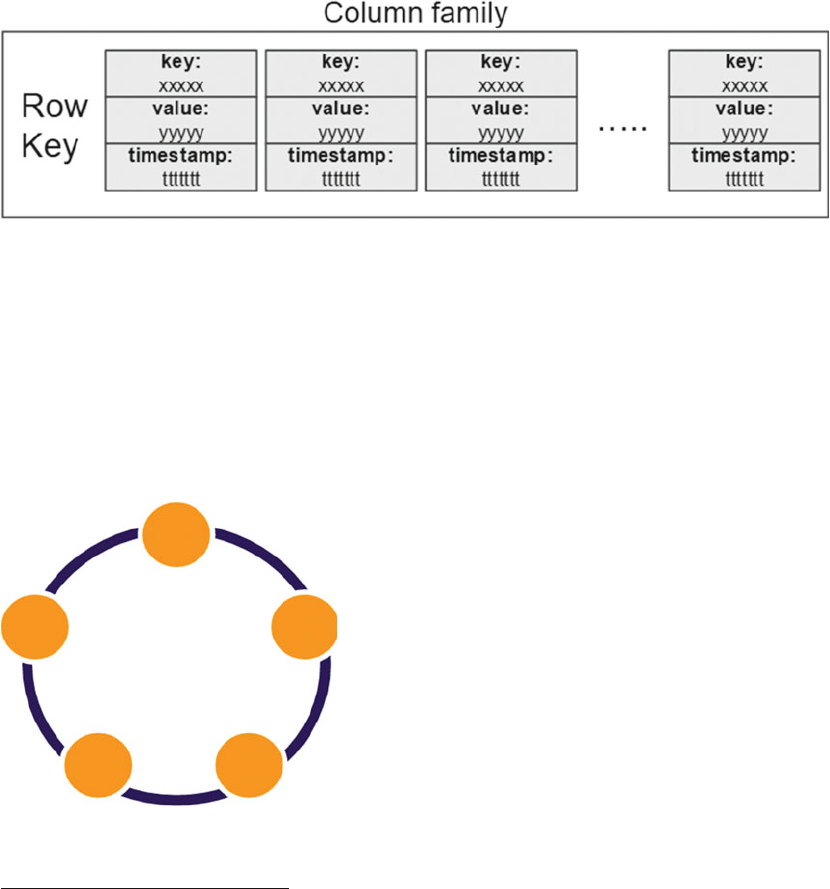

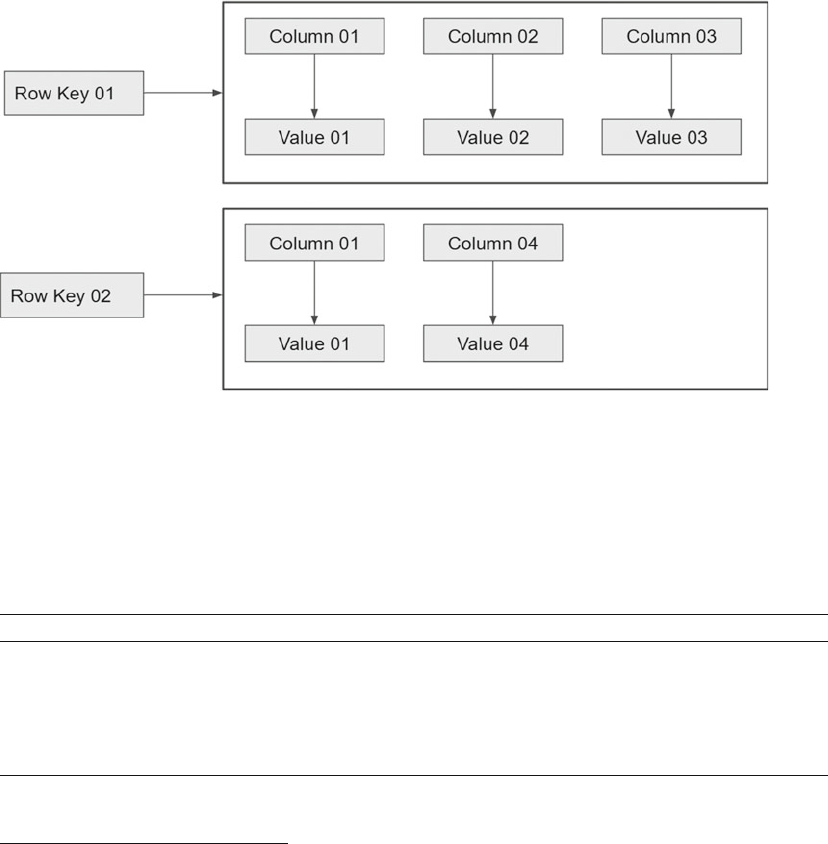

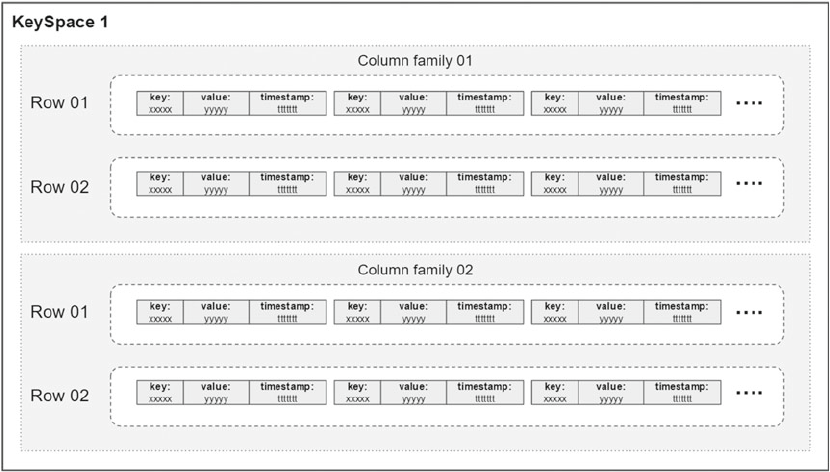

The Memory Value .......................................................................................................... 70

Key-Value and Column .........................................................................................................................70

Why Cassandra? ............................................................................................................. 71

The Data Model .....................................................................................................................................72

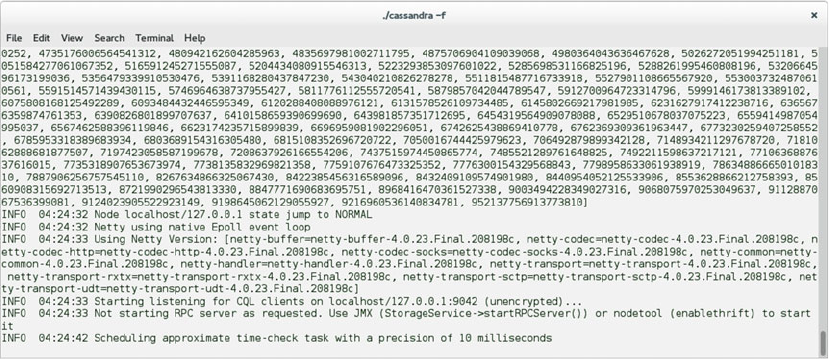

Cassandra 101 ............................................................................................................... 73

Installation ............................................................................................................................................73

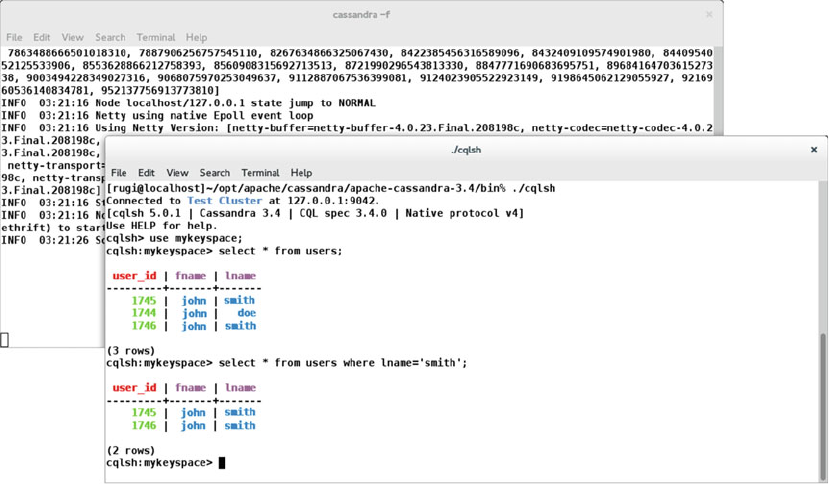

Beyond the Basics .......................................................................................................... 82

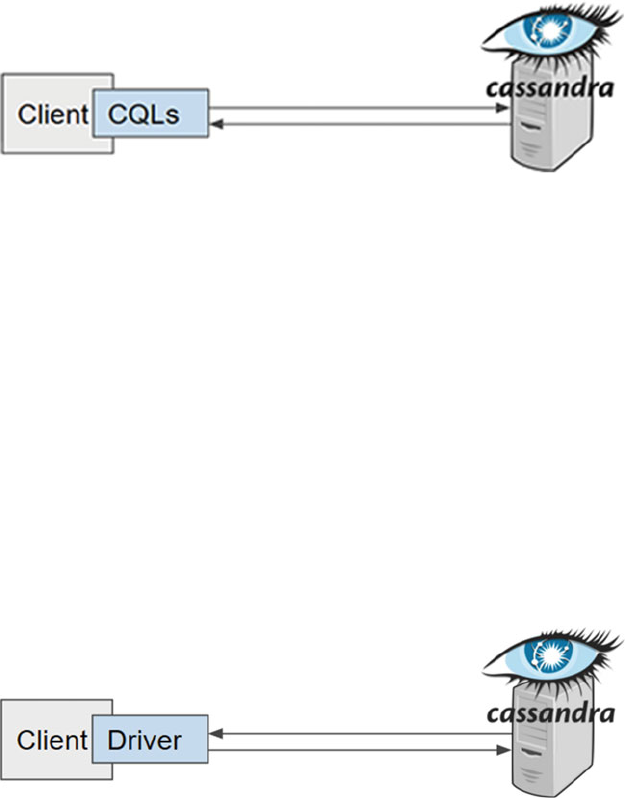

Client-Server ........................................................................................................................................82

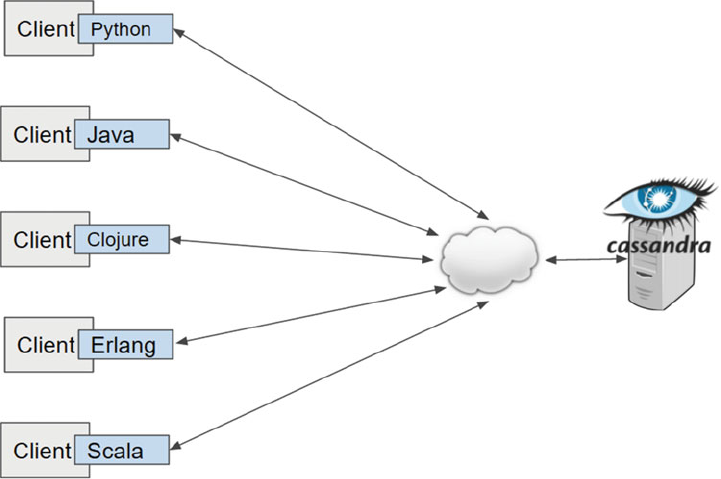

Other Clients .........................................................................................................................................83

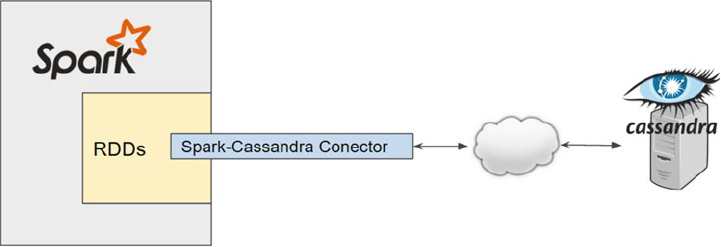

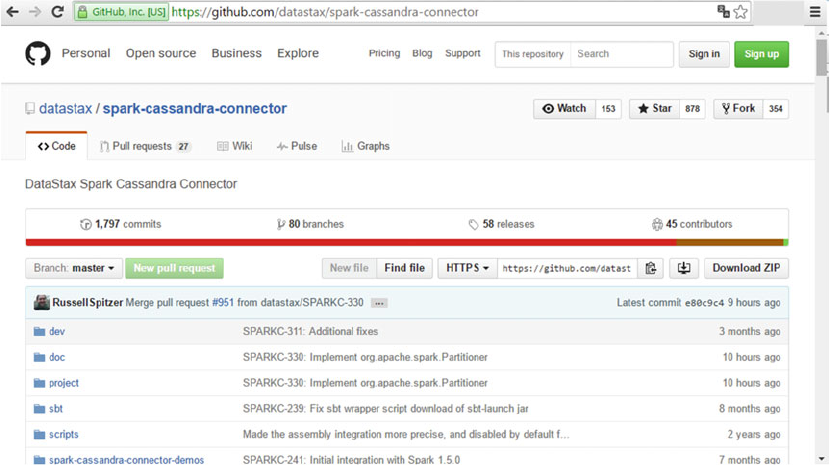

Apache Spark-Cassandra Connector ....................................................................................................87

Installing the Connector ........................................................................................................................87

Establishing the Connection .................................................................................................................89

More Than One Is Better ................................................................................................. 91

cassandra.yaml ....................................................................................................................................92

Setting the Cluster ................................................................................................................................93

Putting It All Together ..................................................................................................... 95

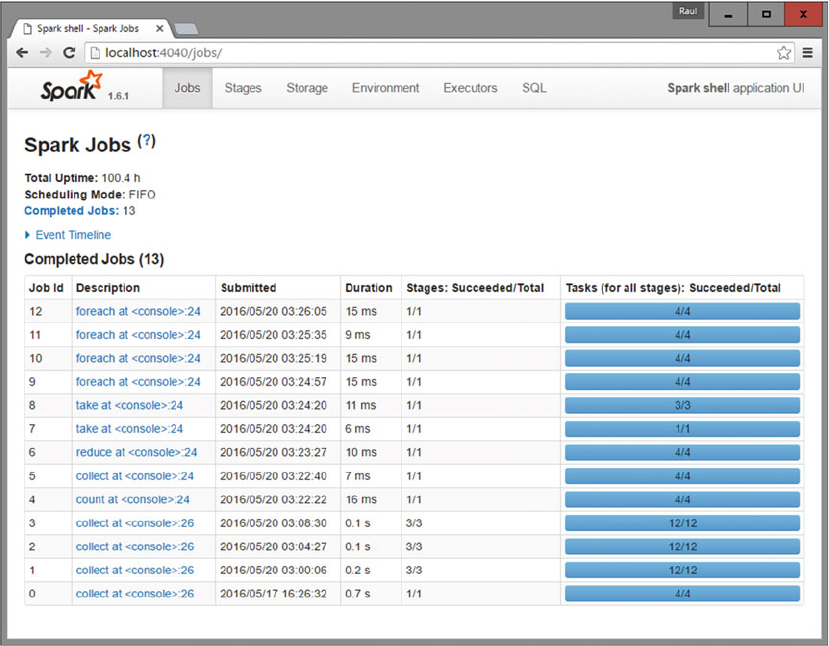

■Chapter 6: The Engine: Apache Spark ................................................................. 97

Introducing Spark ........................................................................................................... 97

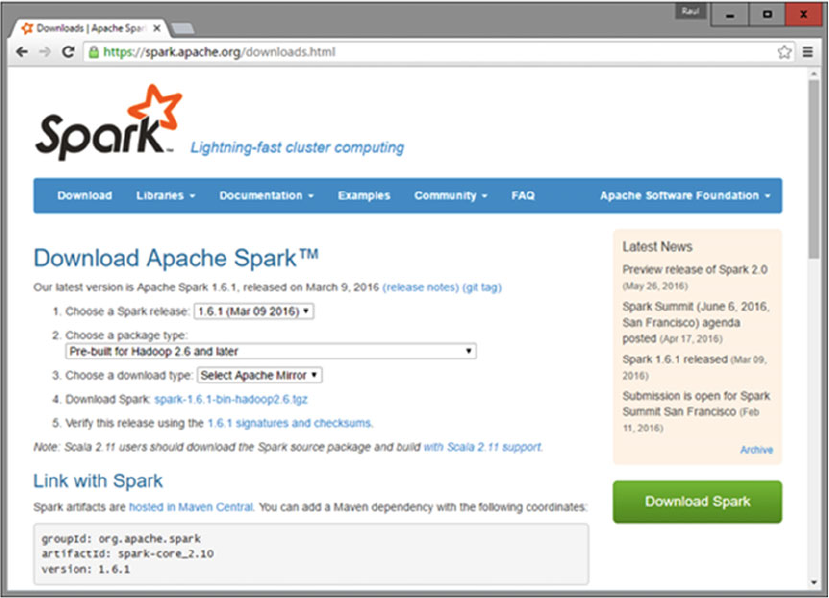

Apache Spark Download ......................................................................................................................98

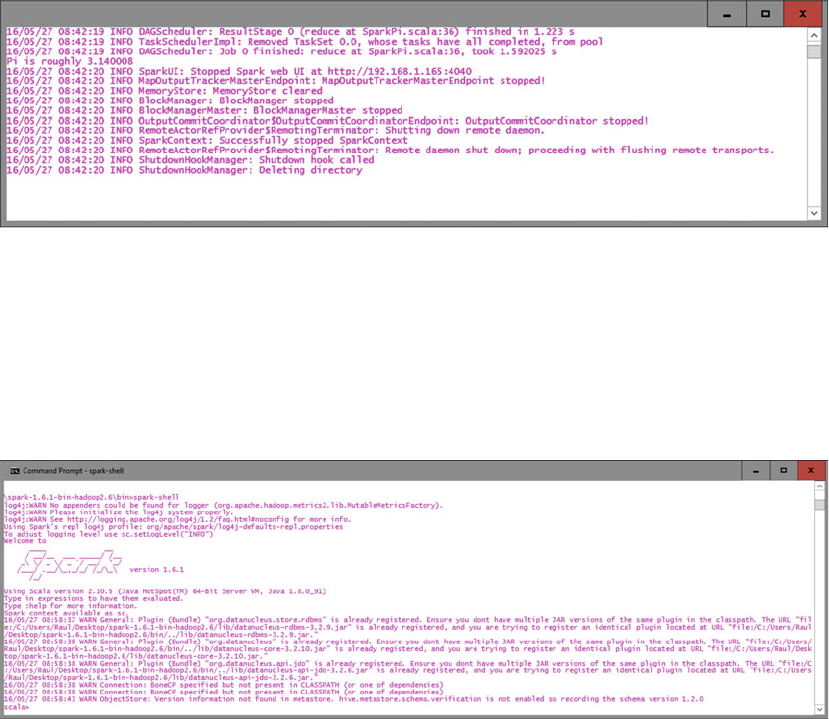

Let’s Kick the Tires ...............................................................................................................................99

Loading a Data File .............................................................................................................................100

Loading Data from S3 .........................................................................................................................100

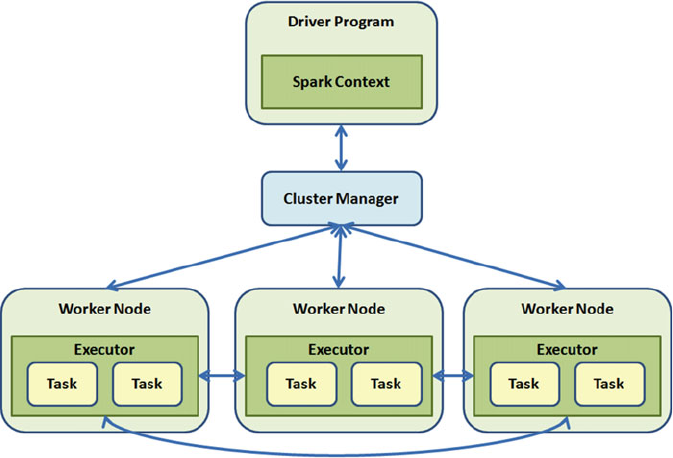

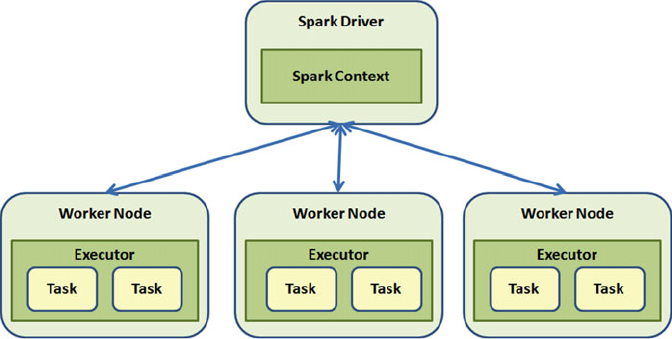

Spark Architecture........................................................................................................ 101

SparkContext ......................................................................................................................................102

Creating a SparkContext .....................................................................................................................102

SparkContext Metadata ......................................................................................................................103

SparkContext Methods .......................................................................................................................103

Working with RDDs....................................................................................................... 104

Standalone Apps .................................................................................................................................106

RDD Operations ..................................................................................................................................108

Spark in Cluster Mode .................................................................................................. 112

Runtime Architecture ..........................................................................................................................112

Driver ..................................................................................................................................................113

Executor ..............................................................................................................................................114

Cluster Manager .................................................................................................................................115

Program Execution .............................................................................................................................115

Application Deployment ......................................................................................................................115

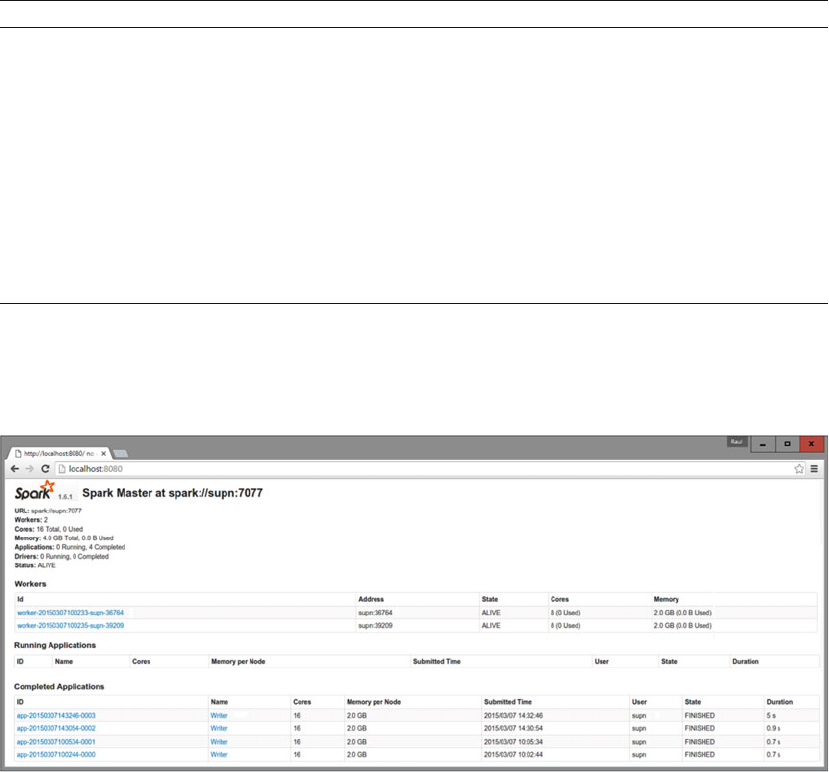

Running in Cluster Mode ....................................................................................................................117

Spark Standalone Mode .....................................................................................................................117

Running Spark on EC2 ........................................................................................................................120

Running Spark on Mesos ....................................................................................................................122

Submitting Our Application .................................................................................................................122

Confi guring Resources .......................................................................................................................123

High Availability ..................................................................................................................................123

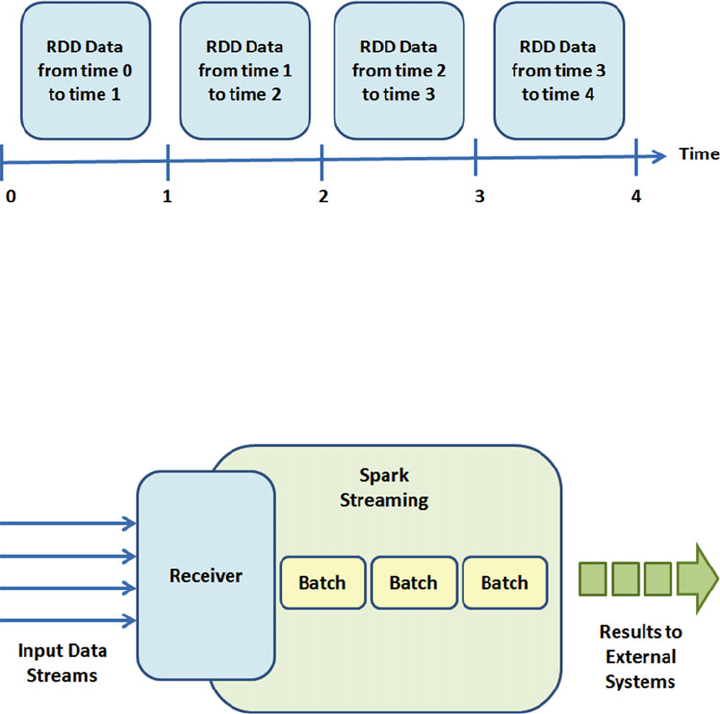

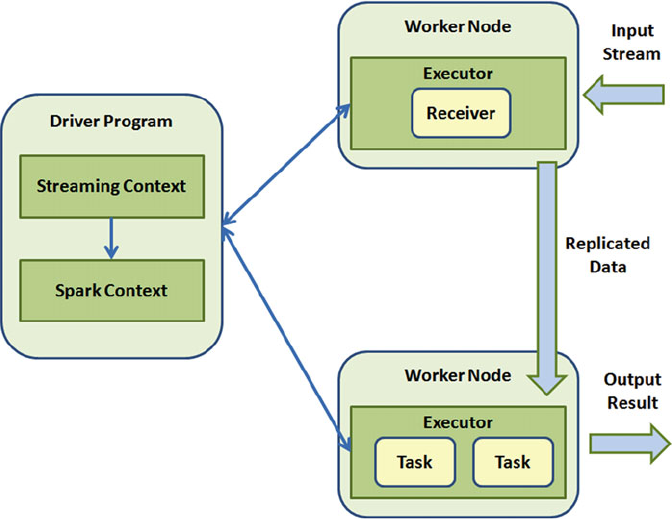

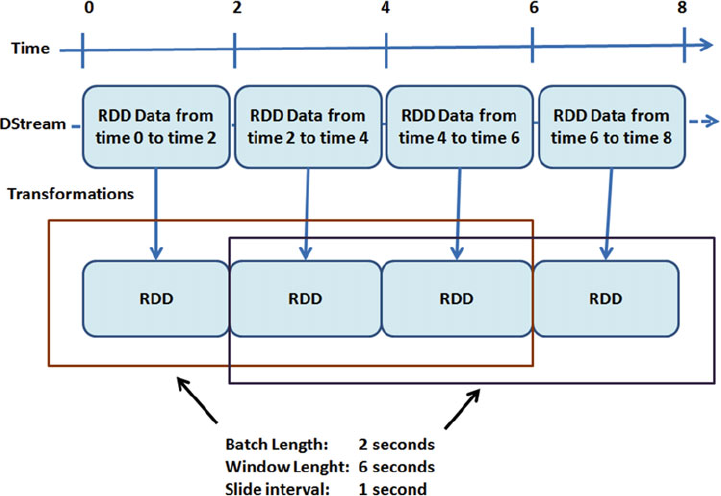

Spark Streaming .......................................................................................................... 123

Spark Streaming Architecture ............................................................................................................124

Transformations ..................................................................................................................................125

24/7 Spark Streaming ........................................................................................................................129

Checkpointing .....................................................................................................................................129

Spark Streaming Performance ...........................................................................................................129

Summary ...................................................................................................................... 130

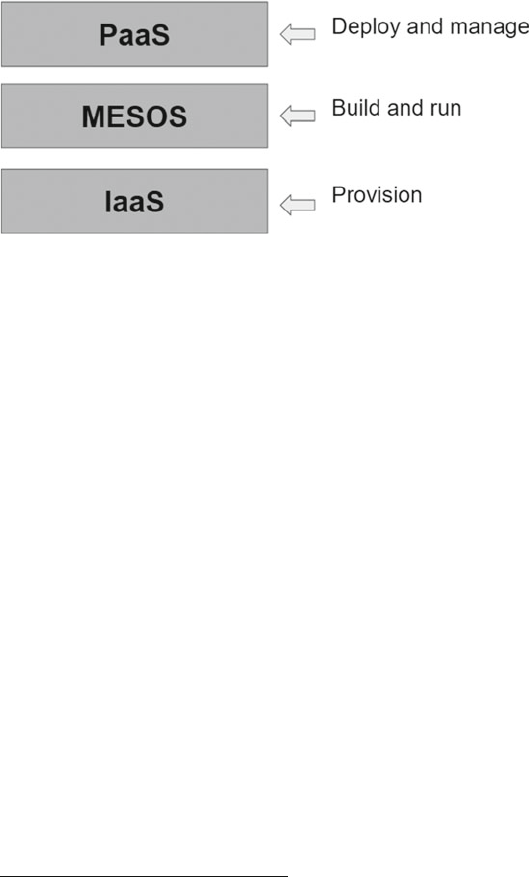

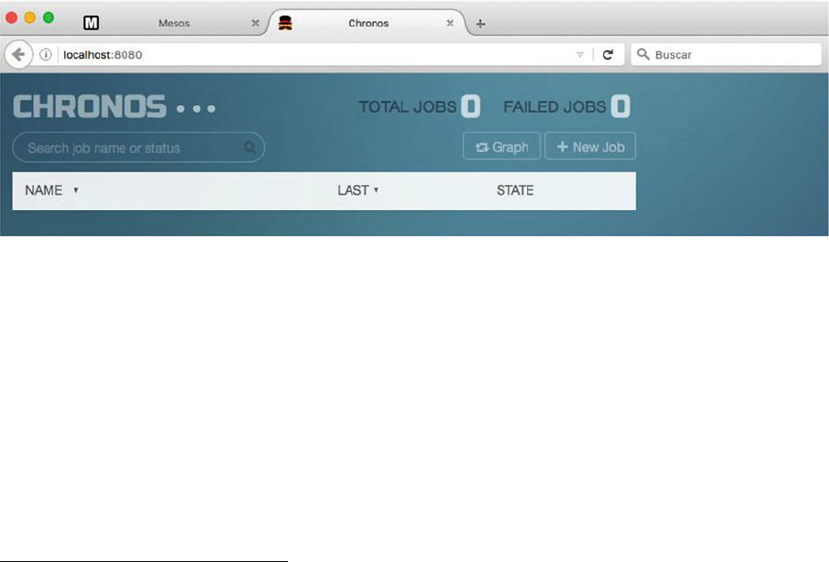

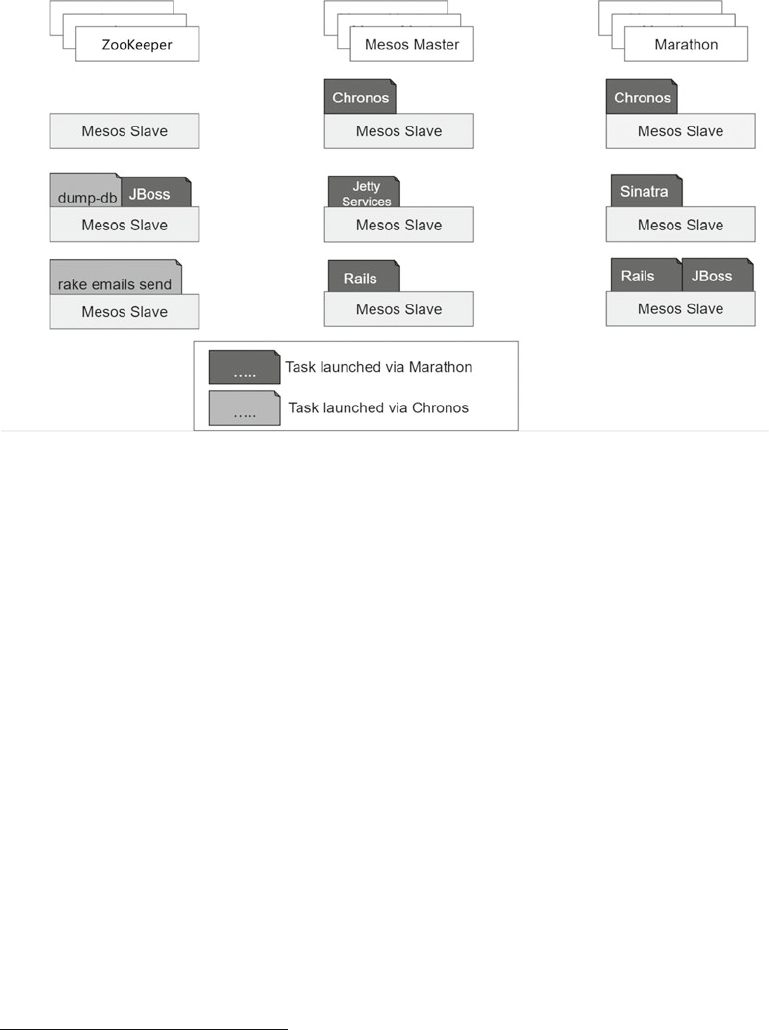

■Chapter 7: The Manager: Apache Mesos ........................................................... 131

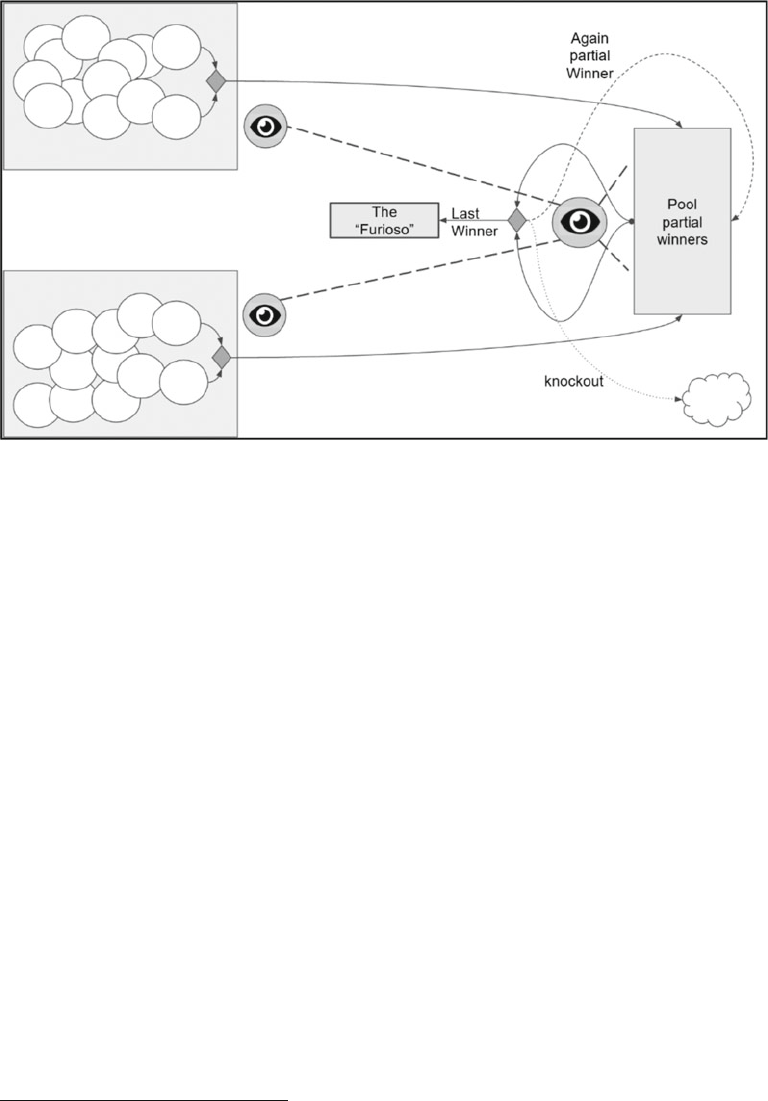

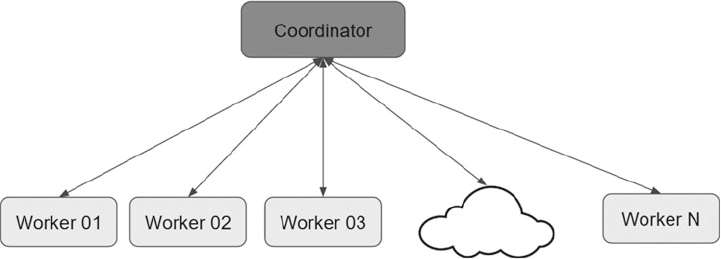

Divide et Impera (Divide and Rule) ............................................................................... 131

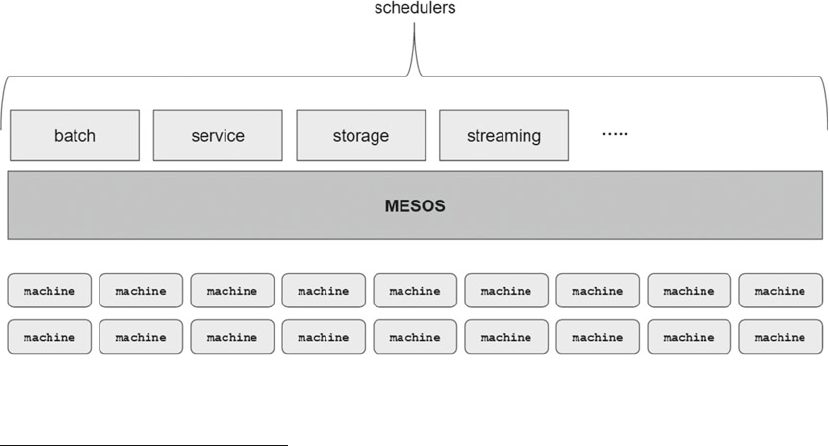

Distributed Systems ..................................................................................................... 134

Why Are They Important? ....................................................................................................................135

It Is Diffi cult to Have a Distributed System ................................................................... 135

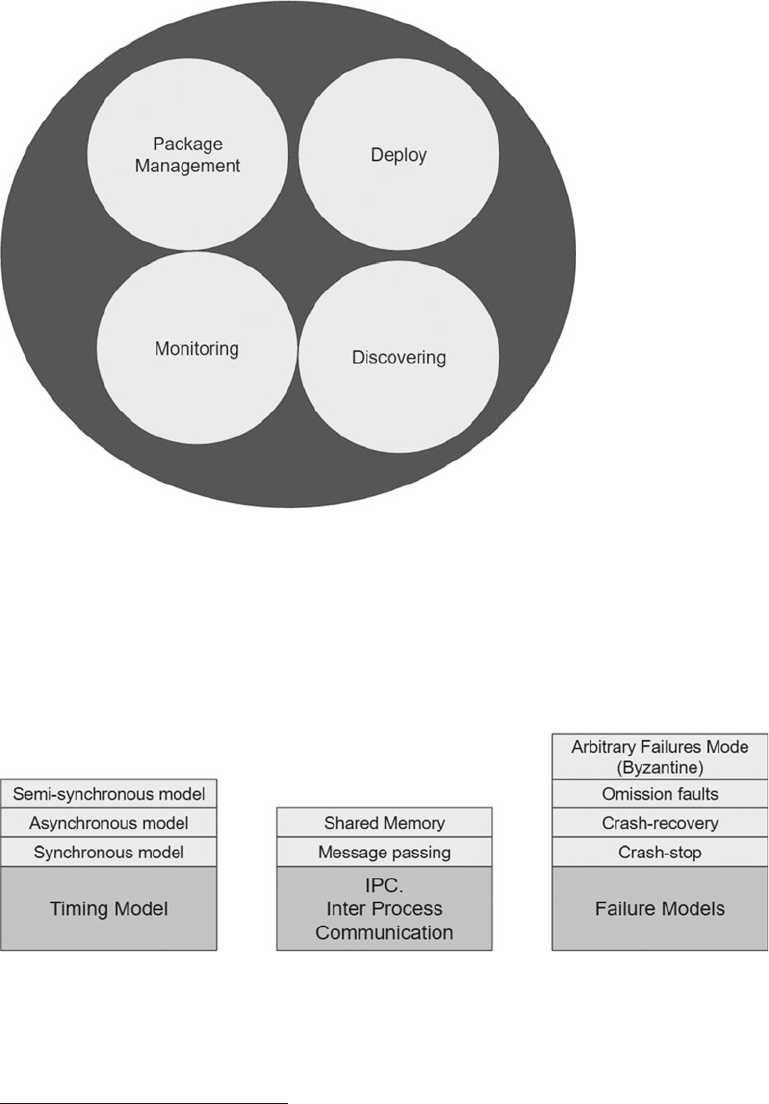

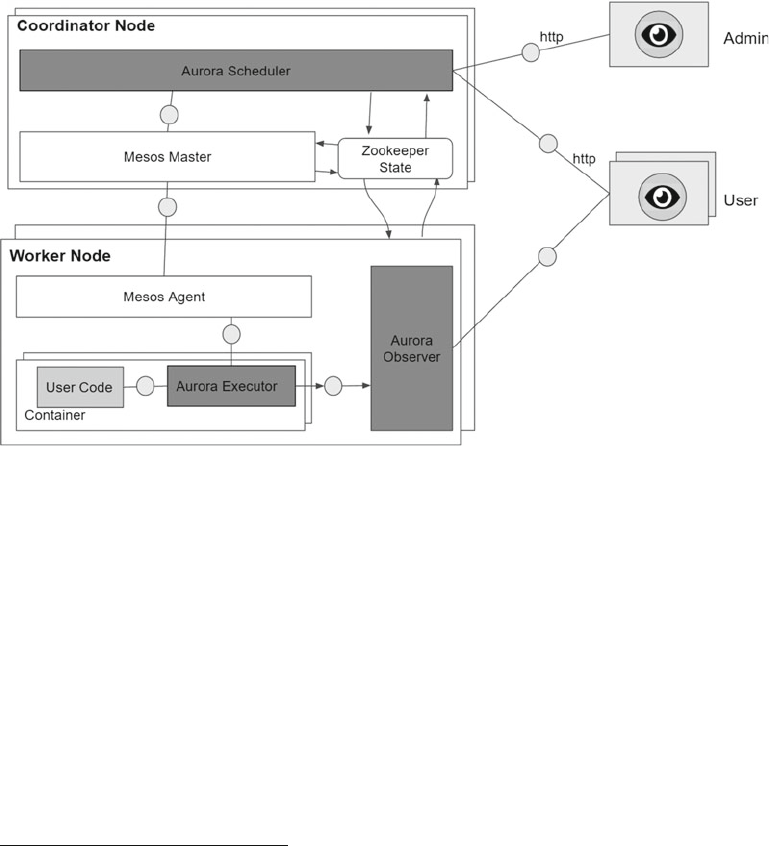

Ta-dah!! Apache Mesos ................................................................................................ 137

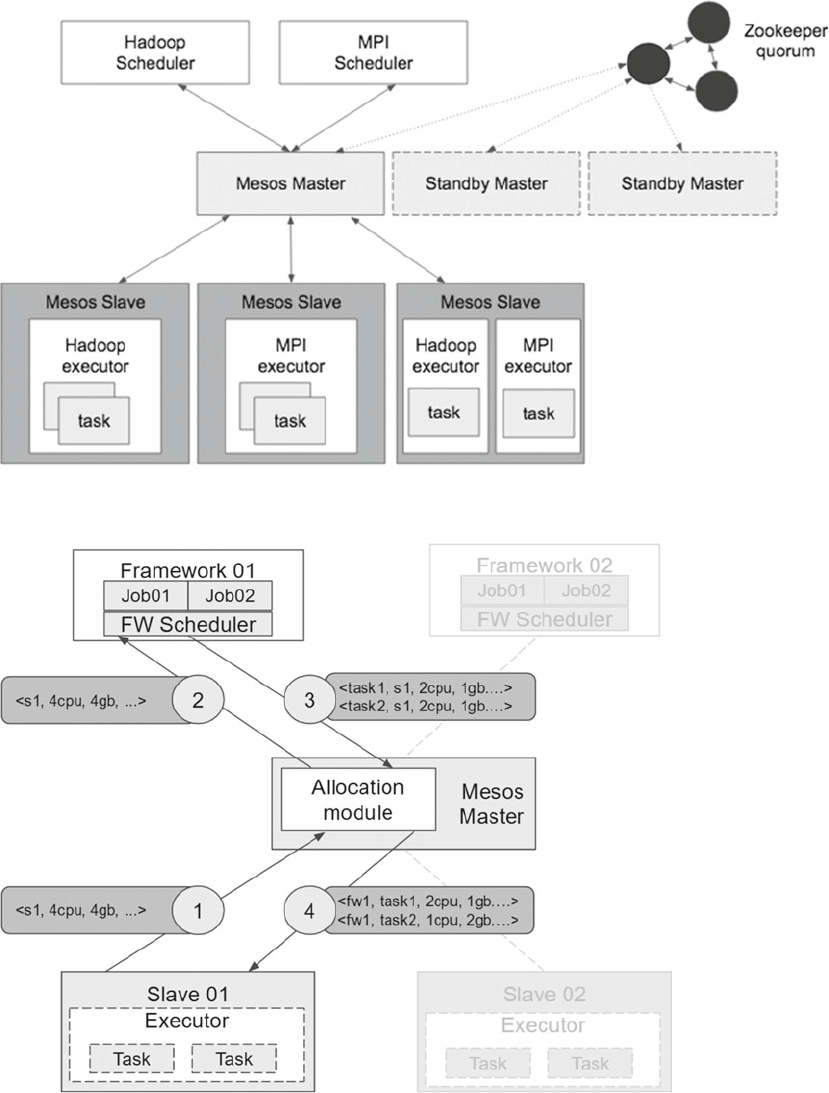

Mesos Framework ........................................................................................................ 138

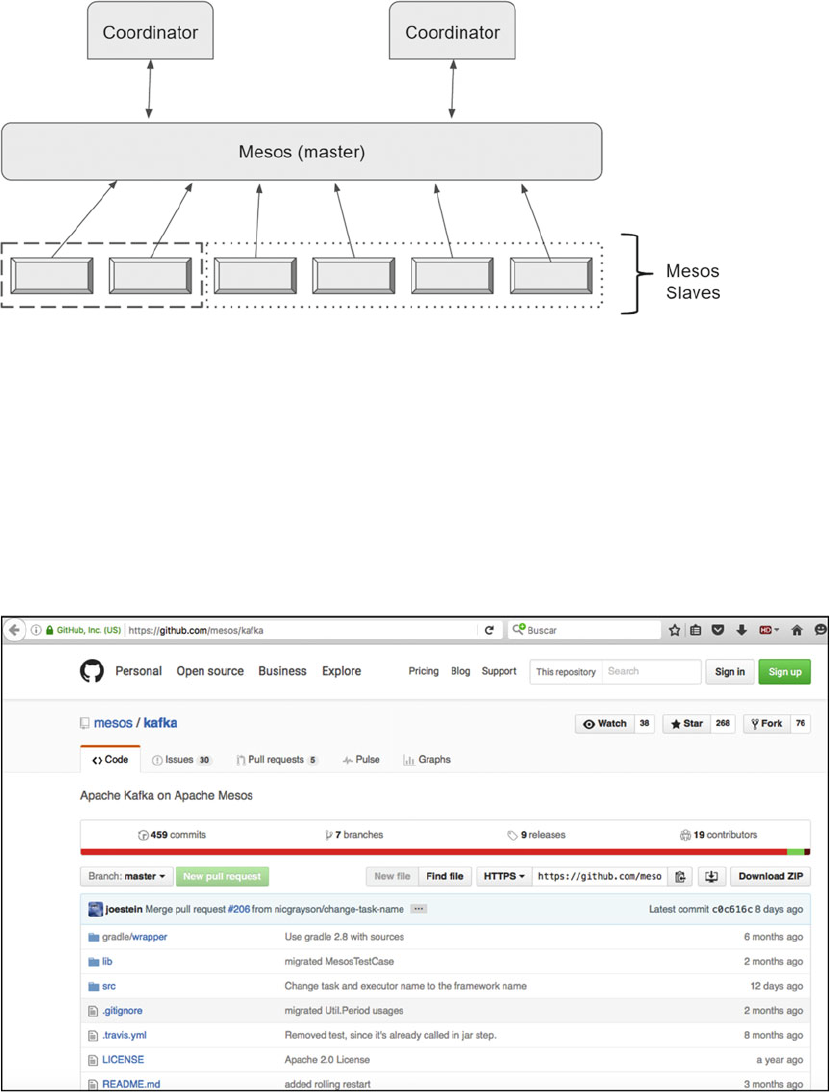

Architecture ........................................................................................................................................138

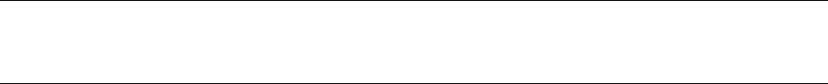

Mesos 101 .................................................................................................................... 140

Installation ..........................................................................................................................................140

Teaming ..............................................................................................................................................146

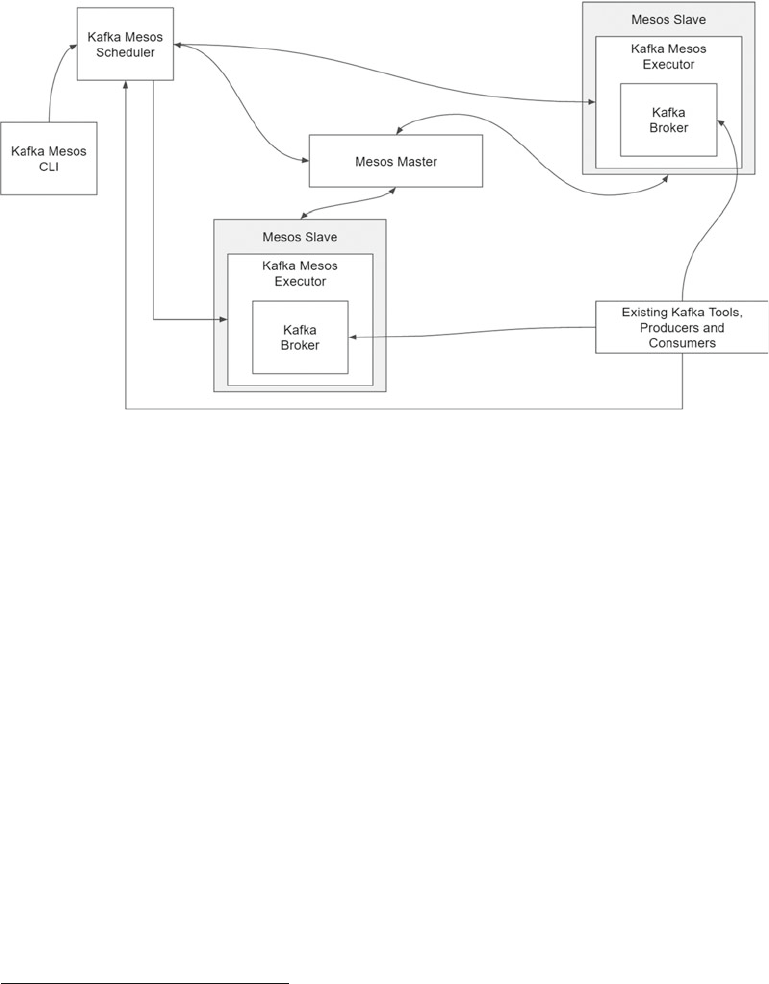

Let’s Talk About Clusters .............................................................................................. 156

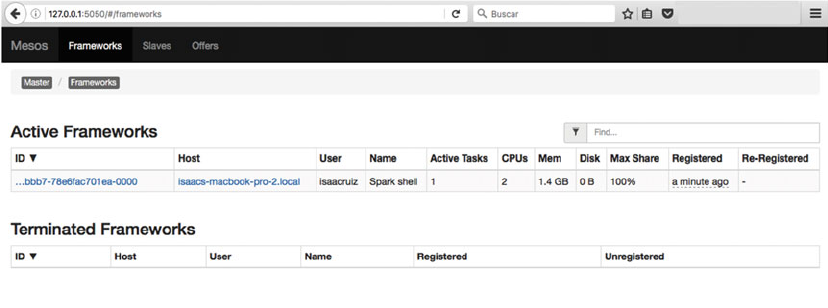

Apache Mesos and Apache Kafka.......................................................................................................157

Mesos and Apache Spark ...................................................................................................................161

The Best Is Yet to Come ......................................................................................................................163

Summary ............................................................................................................................................163

■Chapter 8: The Broker: Apache Kafka ................................................................ 165

Kafka Introduction ........................................................................................................ 165

Born in the Fast Data Era ....................................................................................................................167

Use Cases ...........................................................................................................................................168

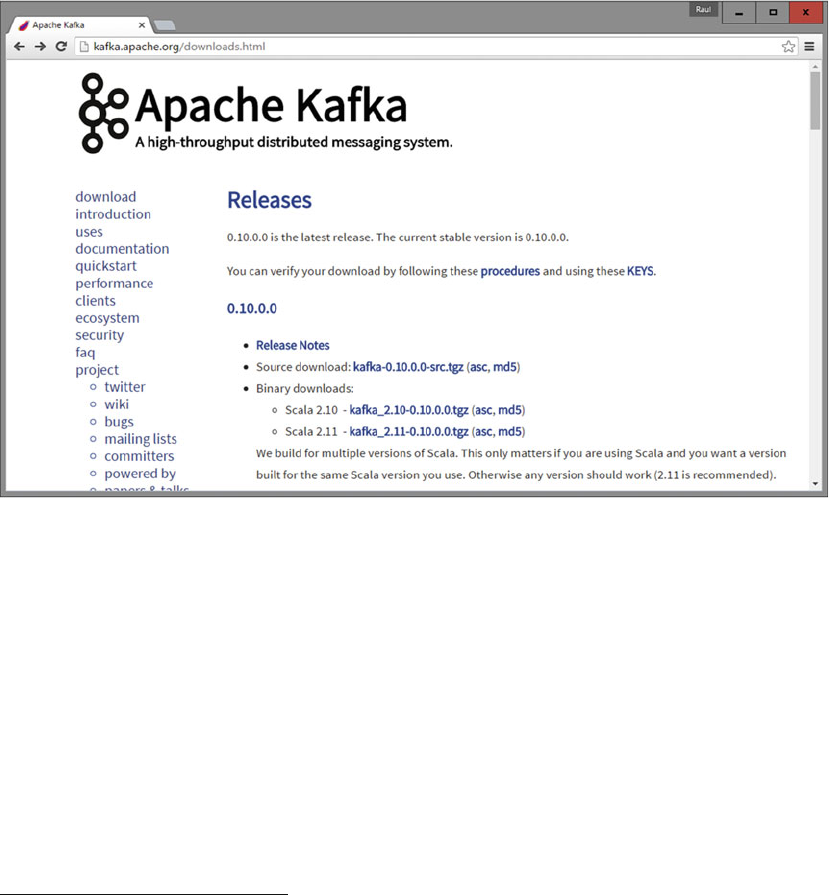

Kafka Installation .......................................................................................................... 169

Installing Java .....................................................................................................................................169

Installing Kafka ...................................................................................................................................170

Importing Kafka ..................................................................................................................................170

Kafka in Cluster ............................................................................................................ 171

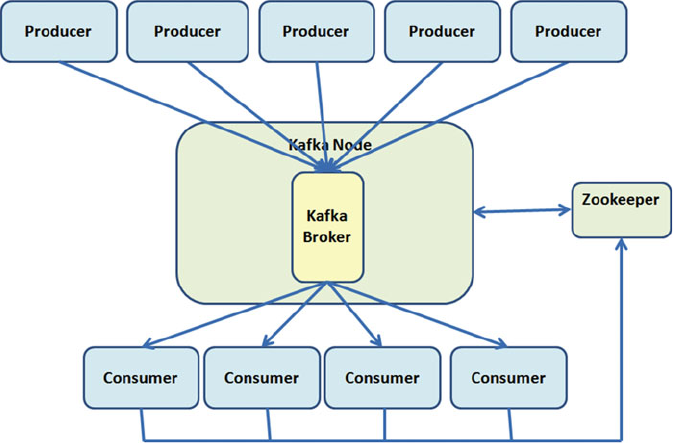

Single Node–Single Broker Cluster ....................................................................................................171

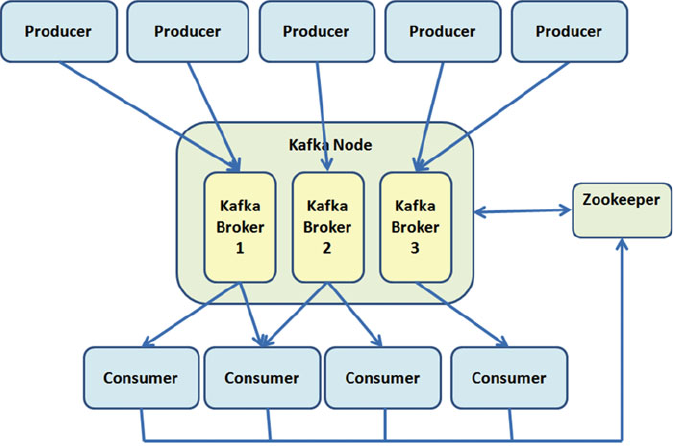

Single Node–Multiple Broker Cluster .................................................................................................175

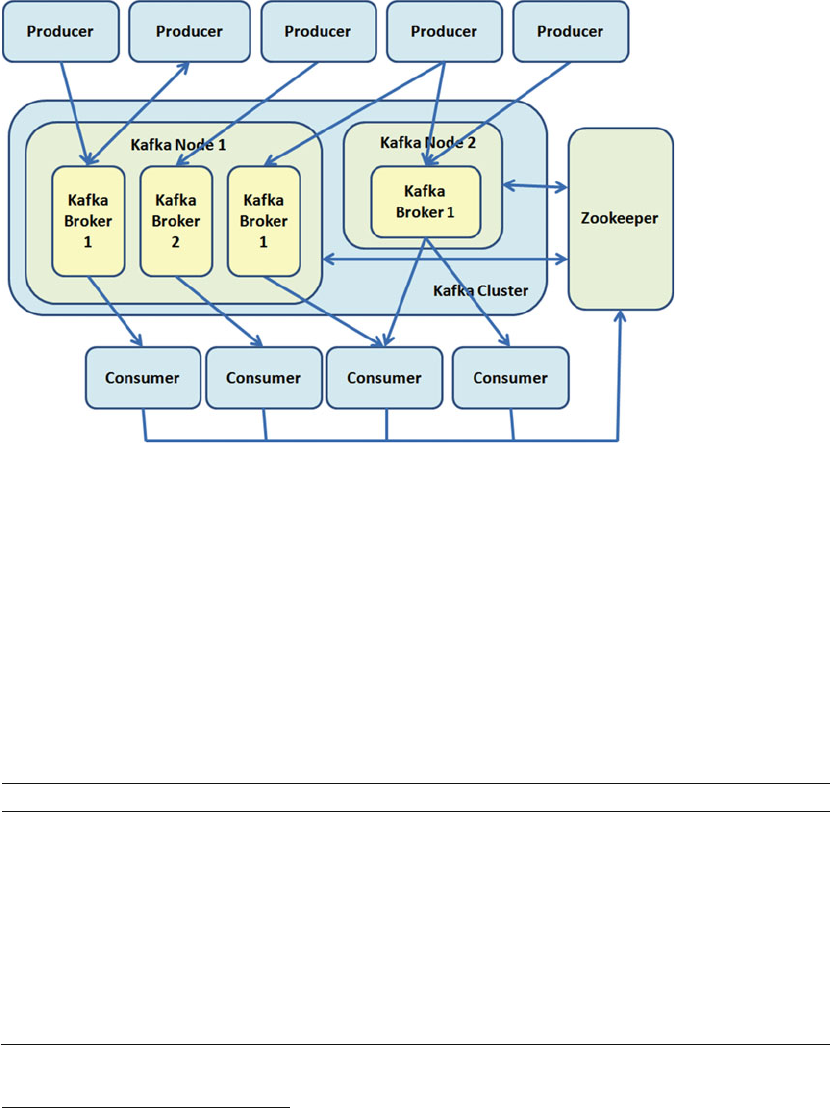

Multiple Node–Multiple Broker Cluster ...............................................................................................176

Broker Properties ................................................................................................................................177

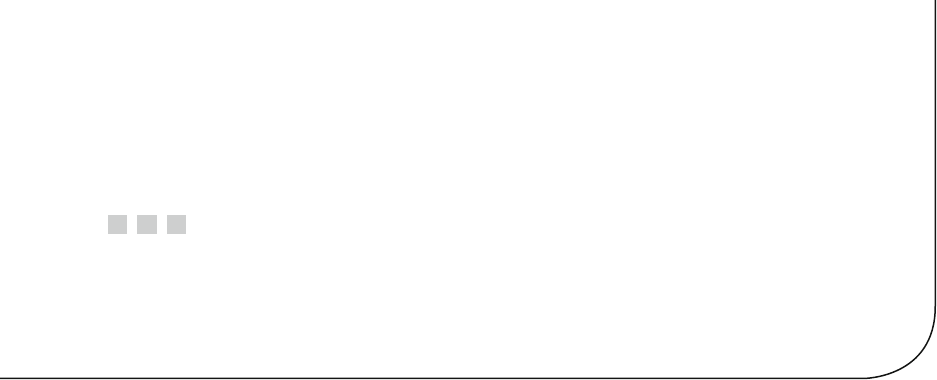

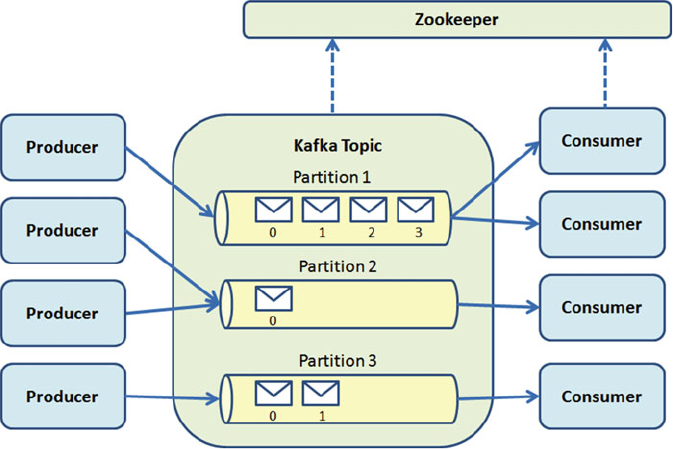

Kafka Architecture ........................................................................................................ 178

Log Compaction ..................................................................................................................................180

Kafka Design ......................................................................................................................................180

Message Compression .......................................................................................................................180

Replication ..........................................................................................................................................181

Kafka Producers ........................................................................................................... 182

Producer API .......................................................................................................................................182

Scala Producers ..................................................................................................................................182

Producers with Custom Partitioning ...................................................................................................186

Producer Properties ............................................................................................................................189

Kafka Consumers ......................................................................................................... 190

Consumer API .....................................................................................................................................190

Simple Scala Consumers ....................................................................................................................191

Multithread Scala Consumers ............................................................................................................194

Consumer Properties ..........................................................................................................................197

Kafka Integration .......................................................................................................... 198

Integration with Apache Spark ...........................................................................................................198

Kafka Administration .................................................................................................... 199

Cluster Tools .......................................................................................................................................199

Adding Servers ...................................................................................................................................200

Summary ...................................................................................................................... 203

■Part III: Improving SMACK ................................................................................. 205

■Chapter 9: Fast Data Patterns ............................................................................ 207

Fast Data ...................................................................................................................... 207

Fast Data at a Glance..........................................................................................................................208

Beyond Big Data .................................................................................................................................209

Fast Data Characteristics ...................................................................................................................209

Fast Data and Hadoop ........................................................................................................................210

Data Enrichment .................................................................................................................................211

Queries ...............................................................................................................................................211

ACID vs. CAP ................................................................................................................. 212

ACID Properties ...................................................................................................................................212

CAP Theorem ......................................................................................................................................213

Consistency ........................................................................................................................................213

CRDT ...................................................................................................................................................214

Integrating Streaming and Transactions ...................................................................... 214

Pattern 1: Reject Requests Beyond a Threshold .................................................................................214

Pattern 2: Alerting on Predicted Trends Variation ...............................................................................215

When Not to Integrate Streaming and Transactions ...........................................................................215

Aggregation Techniques .....................................................................................................................215

Streaming Transformations .......................................................................................... 216

Pattern 3: Use Streaming Transformations to Avoid ETL.....................................................................216

Pattern 4: Connect Big Data Analytics to Real-Time Stream Processing ............................................217

Pattern 5: Use Loose Coupling to Improve Reliability .........................................................................218

Points to Consider...............................................................................................................................218

Fault Recovery Strategies ............................................................................................ 219

Pattern 6: At-Most-Once Delivery .......................................................................................................219

Pattern 7: At-Least-Once Delivery ......................................................................................................220

Pattern 8: Exactly-Once Delivery ........................................................................................................220

Tag Data Identifiers ...................................................................................................... 220

Pattern 9: Use Upserts over Inserts ....................................................................................................221

Pattern 10: Tag Data with Unique Identifiers ......................................................................................221

Pattern 11: Use Kafka Offsets as Unique Identifiers ...........................................................................222

When to Avoid Idempotency ...............................................................................................................223

Example: Switch Processing...............................................................................................................223

Summary ...................................................................................................................... 224

■Chapter 10: Data Pipelines ................................................................................ 225

Data Pipeline Strategies and Principles ....................................................................... 225

Asynchronous Message Passing ........................................................................................................226

Consensus and Gossip ........................................................................................................................226

Data Locality .......................................................................................................................................226

Failure Detection ................................................................................................................................226

Fault Tolerance/No Single Point of Failure ..........................................................................................226

Isolation ..............................................................................................................................................227

Location Transparency ........................................................................................................................227

Parallelism ..........................................................................................................................................227

Partition for Scale ...............................................................................................................................227

Replay for Any Point of Failure ...........................................................................................................227

Replicate for Resiliency ......................................................................................................................228

Scalable Infrastructure .......................................................................................................................228

Share Nothing/Masterless ..................................................................................................................228

Dynamo Systems Principles ...............................................................................................................228

Spark and Cassandra ................................................................................................... 229

Spark Streaming with Cassandra .......................................................................................................230

Saving Data ........................................................................................................................................232

Saving Datasets to Cassandra ............................................................................................................232

Saving a Collection of Tuples ..............................................................................................................233

Saving a Collection of Objects ............................................................................................................234

Modifying CQL Collections ..................................................................................................................234

Saving Objects of Cassandra User-Defined Types ..............................................................................235

Converting Scala Options to Cassandra Options ................................................................................236

Saving RDDs as New Tables ...............................................................................................................237

Akka and Kafka ............................................................................................................ 238

Akka and Cassandra ..................................................................................................... 241

Writing to Cassandra ..........................................................................................................................241

Reading from Cassandra ....................................................................................................................242

Connecting to Cassandra ....................................................................................................................244

Scanning Tweets .................................................................................................................................245

Testing TweetScannerActor ................................................................................................................246

Akka and Spark ............................................................................................................ 248

Kafka and Cassandra ................................................................................................... 249

CQL Types Supported ..........................................................................................................................250

Cassandra Sink ...................................................................................................................................250

Summary ...................................................................................................................... 250

■Chapter 11: Glossary ......................................................................................... 251

ACID .............................................................................................................................. 251

agent ............................................................................................................................ 251

API ................................................................................................................................ 251

BI .................................................................................................................................. 251

big data ........................................................................................................................ 251

CAP ............................................................................................................................... 251

CEP ............................................................................................................................... 252

client-server ................................................................................................................. 252

cloud............................................................................................................................. 252

cluster .......................................................................................................................... 252

column family ............................................................................................................... 252

coordinator ................................................................................................................... 252

CQL ............................................................................................................................... 252

CQLS ............................................................................................................................. 252

concurrency.................................................................................................................. 253

commutative operations ............................................................................................... 253

CRDTs ........................................................................................................................... 253

dashboard .................................................................................................................... 253

data feed ...................................................................................................................... 253

DBMS............................................................................................................................ 253

determinism ................................................................................................................. 253

dimension data ............................................................................................................. 254

distributed computing. ................................................................................................. 254

driver ............................................................................................................................ 254

ETL ............................................................................................................................... 254

exabyte ......................................................................................................................... 254

exponential backoff ...................................................................................................... 254

failover ......................................................................................................................... 254

fast data ....................................................................................................................... 255

gossip ........................................................................................................................... 255

graph database ............................................................................................................ 255

HDFS............................................................................................................................. 255

HTAP ............................................................................................................................. 255

IaaS .............................................................................................................................. 255

idempotence ................................................................................................................ 256

IMDG ............................................................................................................................. 256

IoT ................................................................................................................................ 256

key-value ...................................................................................................................... 256

keyspace ...................................................................................................................... 256

latency .......................................................................................................................... 256

master-slave................................................................................................................. 256

metadata ...................................................................................................................... 256

NoSQL ........................................................................................................................... 257

operational analytics .................................................................................................... 257

RDBMS ......................................................................................................................... 257

real-time analytics ....................................................................................................... 257

replication .................................................................................................................... 257

PaaS ............................................................................................................................. 257

probabilistic data structures ........................................................................................ 258

SaaS ............................................................................................................................. 258

scalability ..................................................................................................................... 258

shared nothing ............................................................................................................. 258

Spark-Cassandra Connector ........................................................................................ 258

streaming analytics ...................................................................................................... 258

synchronization ............................................................................................................ 258

unstructured data ......................................................................................................... 258

Index ..................................................................................................................... 259

About the Authors

Raul Estrada has been a programmer since 1996 and a Java developer since the year 2000. He loves

functional languages like Elixir, Scala, Clojure, and Haskell. With more than 12 years of experience in high

availability and enterprise software, he has designed and implemented architectures since 2003. He has

been an enterprise architect for BEA Systems and Oracle Inc., but he also enjoys mobile programming and

game development. Now he is focused on open source projects related to data pipelining like Apache Spark,

Apache Kafka, Apache Flink, and Apache Beam.

Isaac Ruiz has been a Java programmer since 2001, and a consultant and an architect since 2003. He has

participated in projects in different areas and varied scopes (education, communications, retail, and others).

He specializes in systems integration, particularly in the financial sector. Ruiz is a supporter of free software

and he likes to experiment with new technologies (frameworks, languages, and methods).

About the Technical Reviewer

Rogelio Vizcaino has been programming professionally for ten years, and hacking a little longer than that.

Currently he is a JEE and solutions architect on a consultancy basis for one of the major banking institutions

in his country. Educated as an electronic systems engineer, performance and footprint are more than

“desirable traits” in software to him. Ironically, the once disliked tasks in database maintenance became his

mainstay skills through much effort in the design and development of both relational and non-relational

databases since the start of his professional practice—and the good luck of finding great masters to work

with during the journey. With most of his experience in the enterprise financial sector, Vizcaino’s heart is

with the Web. He keeps track of web-related technologies and standards, where he discovered the delights of

programming back in the late 1990s. Vizcaino considers himself a programmer before an architect, engineer,

or developer; “programming” is an all-encompassing term and should be used with pride. Above all, he likes

to learn, to create new things, and to fix broken ones.

Acknowledgments

We want to say thanks to our acquisitions editor, Susan McDermott, who believed in this project from the

beginning; without her help, it would not have started.

We also thank Rita Fernando and Laura Berendson; without their effort and patience, it would not have

been possible to write this book.

We want to thank our technical reviewer, Rogelio Vizcaino; without him, the project would not have

been a success.

We also want to thank all the heroes who contribute to open source projects, specifically Spark,

Mesos, Akka, Cassandra, and Kafka, with special recognition to those who develop the open source

connectors between these technologies.

We also thank all the people who have educated us and shown us the way throughout our lives.

Introduction

Surveys from 2014, 2015, and 2016 show that, among all software developers, the highest paid are

the data engineers, the data scientists, and the data architects.

This is because there is a huge demand for technical professionals in data; unfortunately for large

organizations and fortunately for developers, the supply is very low.

Traditionally, large volumes of information have been handled by specialized scientists and people

with a PhD from the most prestigious universities. And this is due to the popular belief that not all of us have

access to large volumes of corporate data or large enterprise production environments.

Apache Spark is disrupting the data industry for two reasons. The first is that it is an open source

project. In the last century, companies like IBM, Microsoft, SAP, and Oracle were the only ones capable of

handling large volumes of data, and today there is so much competition between them, that disseminating

designs or platform algorithms is strictly forbidden. Thus, the benefits of open source become stronger

because the contributions of so many people make free tools more powerful than the proprietary ones.

The second reason is that you do not need a production environment with large volumes of data or

large laboratories to develop in Apache Spark. Apache Spark can be installed on a laptop easily and the

development made there can be exported easily to enterprise environments with large volumes of data.

Apache Spark also makes data development free and accessible to startups and small companies.

If you are reading this book, it is for one of two reasons: either you want to be among the best paid IT

professionals, or you already are and you want to learn how today’s trends will become requirements in the

not too distant future.

In this book, we explain how to master the SMACK stack, which is also called Spark++, because it

seems to be the open stack that will most likely succeed in the near future.

PART I

Introduction

© Raul Estrada and Isaac Ruiz 2016

R. Estrada and I. Ruiz, Big Data SMACK, DOI 10.1007/978-1-4842-2175-4_1

CHAPTER 1

Big Data, Big Challenges

In this chapter, we examine the modern architecture challenges facing the SMACK stack (Apache Spark,

Mesos, Akka, Cassandra, and Kafka). Also, we present dynamic processing environment problems to see

which conditions are suitable and which are not.

This chapter covers the following:

• Why we need a pipeline architecture for big data

• The Lambda Architecture concept

• ETL and its dark side

Big Data Problems

We live in the information era, where almost everything is data. In modern organizations, there is a clear

distinction between data engineers and data architects. Data engineers are experts who know in depth the

inner workings and handling of the data engines. The data architect understands all the data sources,

both internal and external. Internal sources are usually owned systems. External sources are systems outside the

organization. The first big data problem is that the number of data sources increases with time.

A few years ago, a big company’s IT department could survive without data architects or data engineers.

Today’s challenge is to find good architects. The main purpose of architecture is always resilience. If the data

architect doesn’t have a data plan, then the data sources and the data size will become unmanageable.

The second problem is obtaining a data sample. When you are a data analyst (the person charged

with the compilation and analysis of numerical information), you need data samples—that is, data from

production environments. If the size of the data and/or the number of data sources increases, then obtaining

data samples becomes a herculean task.

The third big data problem is that an analysis loses its validity as time passes.

Today, we have all the information. The true value of data is related to time. Within minutes, a

recommendation, an analysis, or a prediction can become useless.

The fourth problem is related to the return on investment of an analysis. The analysis velocity is directly

proportional to the return on investment. If the data analyst can’t get data in a timely way, then analysis costs

increase and the earnings decrease.

Electronic supplementary material The online version of this chapter (doi: 10.1007/978-1-4842-2175-4_1 )

contains supplementary material, which is available to authorized users.

Infrastructure Needs

Modern companies require a scalable infrastructure. The costs of your data center are always in accordance

with your business size. There is expensive hardware and costly software. And nowadays, when it comes to

open source software, people’s first thoughts are the high costs of consulting or the developer’s price tag. But

there is good news: today, big data solutions are not exclusive to large budgets.

Technologies must be distributed. Nowadays, when we talk about distributed software, we are no longer

talking about multiple processors; instead, we are talking about multiple data centers. This is the same

system, geographically dispersed.

If your business grows, your data should fit those needs. This is scalability. Most people are afraid of

the term big data, and spend valuable economic resources to tackle a problem that they don’t have.

Traditionally, business growth implies growth in data volume. Here, the good news is that you can scale

linearly with cheap hardware and inexpensive software.

Faster processing speed is not related to processor cycles per second, but to the speed of your entire

enterprise process. The now is everything, opportunities are unique, and few situations are repeatable.

When we talk about complex processing, we are not talking about the “Big O” of an algorithm. This is

related to the number of actors involved in one process.

The data flow is constant. The days when businesses could store everything in warehouses are gone.

The businesses that deliver responses the next day are dying. The now is everything. Data warehouses are

dying because stored data goes stale, and the shelf life of data is shorter every day. The costs associated with

a warehouse are not affordable today.

And finally, there is visible and reproducible analysis. As we have mentioned, data analysts need fresh

and live data to satisfy their needs. If data becomes opaque, the business experiences a lack of management.

ETL

ETL stands for extract, transform, load. And it is, even today, a very painful process. The design and

maintenance of an ETL process is risky and difficult. Contrary to what many enterprises believe, they serve

the ETL and the ETL doesn’t serve anyone. It is not a requirement; it is a set of unnecessary steps.

Each step in ETL has its own risk and introduces errors. Sometimes, the time spent debugging the ETL

result is longer than the ETL process itself. ETL always introduces errors. Everyone dedicated to ETL knows

that having no errors is an error. In addition, everyone dedicated to ETL knows that applying ETL to

sensitive data is playing with the company’s stability.

When an ETL process fails, the odds of data duplication are high.

Expensive debugging (human and technological) must follow an ETL failure. This

means looking for duplicates and restoring information.

The tools usually cost millions of dollars. Big companies know that ETL is good business for them, but not

for the client. The human race has invested a lot of resources (temporal and economic) in making ETL tools.

The ETL decreases throughput. The performance of the entire company decreases when the ETL

process is running, because the ETL process demands resources: network, database, disk space, processors,

humans, and so forth.

The ETL increases complexity. Few computational processes are as common and as complicated. When

a process requires ETL, the consultants know that the process will be complex, because ETL rarely adds

value to a business’s “line of sight” and requires multiple actors, steps, and conditions.

ETL requires writing intermediary files. Yes, as if computational resources were infinite, costless, and easily

replaceable. In today’s economy, the concept of big intermediary files is an aberration that should be removed.

The ETL involves parsing and reparsing text files. Yes, the lack of appropriate data structures leads to

unnecessary parsing processes. And when they finish, the result must be reparsed to ensure the consistency

and integrity of the generated files.

Finally, the ETL pattern must be duplicated across all our data centers. The number doesn’t matter;

the ETL has to be replicated in every data center.

CHAPTER 1 ■ BIG DATA, BIG CHALLENGES


The good news is that pipelines built on the SMACK stack typically need no ETL. ETL is the opposite of

high availability, resiliency, and distribution. As a rule of thumb, if you write a lot of intermediary files, you

suffer from ETL, as if your resources (computational and economic) were infinite.

The first step is to remove the extract phase. Today we have very powerful tools (for example, Scala) that

can work with binary data preserved under strongly typed schemas (instead of using big text dumps parsed

among several heterogeneous systems). Scala is thus an elegant weapon for a more civilized big data age.
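
To illustrate what "binary data preserved under strongly typed schemas" can look like, here is a minimal sketch, written in Python for brevity. The Reading record, its fields, and its binary layout are assumptions made up for this illustration; they do not come from any SMACK component.

```python
import struct
from dataclasses import dataclass

# Hypothetical fixed layout (illustration only), little-endian, no padding:
# sensor id (unsigned 32-bit), timestamp in epoch milliseconds (unsigned 64-bit),
# reading value (64-bit float).
_LAYOUT = struct.Struct("<IQd")


@dataclass(frozen=True)
class Reading:
    """A typed record: every consumer sees the same fields and types."""
    sensor_id: int
    timestamp_ms: int
    value: float

    def to_bytes(self) -> bytes:
        """Encode the record using the fixed binary layout."""
        return _LAYOUT.pack(self.sensor_id, self.timestamp_ms, self.value)

    @classmethod
    def from_bytes(cls, payload: bytes) -> "Reading":
        """Decode a record; struct.error is raised if the payload size is wrong."""
        return cls(*_LAYOUT.unpack(payload))


original = Reading(sensor_id=7, timestamp_ms=1_467_331_200_000, value=21.5)
payload = original.to_bytes()          # compact binary form, no text parsing
decoded = Reading.from_bytes(payload)  # typed object on the receiving side
```

The record travels as a compact binary payload and arrives as a typed object, so no system in the chain has to parse and reparse a text dump.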

The second step is to remove the load phase. Today, your data collection can be done with a modern

distributed messaging system (for example, Kafka) and you can make the distribution to all your clients in

real time. There is no need to batch “load.”

Lambda Architecture

Lambda Architecture is a data processing architecture designed to handle massive quantities of data by

taking advantage of both batch and stream processing methods. As you saw in previous sections, today’s

challenge is to have batch and streaming at the same time.

One of the best options is Spark. This wonderful framework allows batch and stream data processing in the

same application at the same time. Unlike many Lambda solutions, SMACK satisfies these two requirements: it

can handle a data stream in real time and handle disparate data models from multiple data sources.

In SMACK, we persist the analytics data produced by Spark in Cassandra, so we guarantee access

to historical data as requested. In case of failure, Cassandra has the resiliency to replay our data from before the

error. Spark is not the only tool that allows both behaviors at the same time, but we believe that Apache

Spark is the best.
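
The idea of combining batch and stream processing can be sketched in a few lines of plain Python. This is only an illustration of the Lambda pattern, not Spark or Cassandra code; the page-hit events and the names batch_view, SpeedLayer, and query are hypothetical.

```python
from collections import Counter
from typing import Iterable, Tuple

# Hypothetical event shape for this sketch: (page, hits).
Event = Tuple[str, int]


def batch_view(history: Iterable[Event]) -> Counter:
    """Batch layer: recompute totals from the full stored history."""
    view = Counter()
    for page, hits in history:
        view[page] += hits
    return view


class SpeedLayer:
    """Speed layer: absorbs events that arrived after the last batch run."""

    def __init__(self) -> None:
        self.realtime_view = Counter()

    def on_event(self, event: Event) -> None:
        page, hits = event
        self.realtime_view[page] += hits


def query(page: str, batch: Counter, speed: SpeedLayer) -> int:
    """Serving layer: merge the batch view with the real-time view."""
    return batch[page] + speed.realtime_view[page]


history = [("home", 3), ("docs", 1), ("home", 2)]  # already persisted
batch = batch_view(history)

speed = SpeedLayer()
speed.on_event(("home", 1))  # arrives after the batch run
speed.on_event(("blog", 4))

home_total = query("home", batch, speed)
blog_total = query("blog", batch, speed)
```

A query always merges both views, so answers include historical data and the events that arrived since the last batch run.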

Hadoop

Apache Hadoop is an open-source software framework written in Java for distributed storage and the

distributed processing of very large data sets on computer clusters built from commodity hardware.

There are two main components associated with Hadoop: Hadoop MapReduce and Hadoop

Distributed File System (HDFS). These components were inspired by Google’s MapReduce and Google File System papers.

We could talk more about Hadoop, but there are lots of books specifically written on this topic. Hadoop was

designed in a context where size, scope, and data completeness are more important than speed of response.

Here you face a crucial decision: if the issue that you need to solve is more like data

warehousing and batch processing, Apache Hadoop could be your solution. On the other hand, if the issue

is the speed of response and the amount of information is measured in speed units instead of data size units,

Apache Spark is your solution.

Data Center Operation

We take this space to reflect briefly on how data center operation has changed.

Yesterday, everything scaled up; today, everything scales out. A few years ago, the term data center

meant proprietary use of specialized and expensive supercomputers. Today’s challenge is to be competitive

using commodity computers connected with an inexpensive network.

The total cost of ownership determines everything. Business determines the cost and size of the data center.

Modern startups always rise from a small data center. Buying or renting an expensive data center just to see

if your startup is a good idea makes no sense in the modern economy.

The M in SMACK is a good solution to all your data center needs. With Apache Mesos, you can

“abstract” all the resources from all the interconnected small computers to build a supercomputer with the

linear sum of each machine’s resources: CPU cores, memory, disk, and network.
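
To make the "linear sum" idea concrete, here is a minimal sketch in plain Python. It does not use the Mesos API; the Resources class, the cluster_capacity function, and the node sizes are hypothetical, and network capacity is left out to keep the example short.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class Resources:
    """Resources offered by one machine (hypothetical units)."""
    cpus: int
    mem_gb: int
    disk_gb: int

    def __add__(self, other: "Resources") -> "Resources":
        return Resources(
            self.cpus + other.cpus,
            self.mem_gb + other.mem_gb,
            self.disk_gb + other.disk_gb,
        )


def cluster_capacity(nodes: Iterable[Resources]) -> Resources:
    """The pooled capacity is the plain sum of what each node contributes."""
    total = Resources(0, 0, 0)
    for node in nodes:
        total = total + node
    return total


# Three small commodity machines seen as one pool of resources.
nodes = [Resources(4, 16, 500), Resources(8, 32, 1000), Resources(4, 8, 250)]
total = cluster_capacity(nodes)
```

Adding a fourth machine simply adds its cores, memory, and disk to the pool, which is what scaling out means in practice.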


The Open Source Reign

A few years ago, dependency on a vendor was a double-edged sword. On one hand, large companies hired

proprietary software firms to later blame the manufacturer for any failure in their systems. On the other

hand, this dependence made everything (processes, development, and maintenance) slow, and every issue

was discussed with a contract in hand.

Many large companies don’t implement open source solutions for fear that no one else can provide the

same support as large manufacturers. But weighing both options, vendor lock-in and external bug

fixing are typically more expensive than open source solutions.

In the past, the big three-letter monopolies dictated the game rules. Today, the rules are made “by and

for” the developers, and transparency is guaranteed by APIs defined by the same community. Some groups,

like the Apache Software Foundation and the Eclipse Foundation, provide guides, infrastructure, and tools

for sustainable and fair development of these technologies.

Obviously, nothing is free in this life; companies must invest in training their staff on open source

technologies.

The Data Store Diversification

Few people see this, but this is the beginning of the decline of the relational databases era. Since 2010,

and the emergence of NoSQL and NoETL, there has been tough criticism of traditional systems, which is

redefining the leader board.

Due to modern business needs, having everything stored in a relational database will go from being the

standard way to the old-fashioned and obsolete way. Simple daily problems like recording the data, multiple

store synchronization, and expensive store size are promoting NoSQL and NoETL solutions.

When moving data, gravity and location matter. Data gravity is related to the costs associated with

moving a huge amount of data from one point to another. Sometimes, the simple everyday task of restoring a

backup can be a daunting task in terms of time and money.

Data locality is a modern concept related to moving the computation resources to where the data is

located, rather than moving the data to where the computation is. It sounds simple, but due to the hardware

(re)evolution, complex calculations can now run on new and powerful client machines without

hurting the customer’s perception of the performance of the entire system.

DevOps (development and operations) is a term coined by Andrew Clay Shafer and Patrick Debois at the

Agile Conference in 2008.¹ Since then, DevOps has become a movement, a culture, and a lifestyle where

software developers and information technology professionals charged with data center operation can live

and work in harmony. How is this achieved? Easy: by dissolving the differences between them.

Today DevOps is one of the most profitable IT specializations. Modern tools like Docker and Spark

simplify the movement between testing and production environments. Developers can easily get production

data, and testing environments almost mirror production environments.

As you will see in Chapter 7, today’s tendency is to containerize the pipeline from

development to production.

1 http://ieeexplore.ieee.org/xpl/mostRecentIssue.jsp?punumber=4599439


Is SMACK the Solution?

Even today, there are very few companies fully using SMACK. Instead, many major companies use a flavor

of SMACK: just one, two, or three letters of the SMACK stack. As previously mentioned, Spark has

many advantages over Hadoop. Spark also solves problems that Hadoop cannot. However, there are some

environments where Hadoop has deep roots and where workflow is completely batch based. In these

instances, Hadoop is usually a better choice.

Several SMACK letters have become a requirement for some companies that are in pilot stages and aim

to capitalize on all their investment in big data tools and training. The purpose of this book is to give you options.

The goal is not to make a full pipeline architecture installation of all five technologies.

There are many alternatives to the SMACK stack technologies. For example, YARN may be an

alternative to Mesos. For batch processing, Apache Flink can be an alternative to Spark. The SMACK stack

axiom is to build an end-to-end pipeline and have the right component in the correct position, so that

integration can be done quickly and naturally, instead of having expensive tools that require a lot of effort to

work together.


© Raul Estrada and Isaac Ruiz 2016

R. Estrada and I. Ruiz, Big Data SMACK, DOI 10.1007/978-1-4842-2175-4_2

CHAPTER 2

Big Data, Big Solutions

In Chapter 1, we answered the Why? In this chapter, we will answer the How? When you understand the

Why, the answer to the How is only a matter of time.

This chapter covers the following topics:

• Traditional vs. modern (big) data

• SMACK in a nutshell

• Spark, the engine

• Mesos, the container

• Akka, the model

• Cassandra, the storage

• Kafka, the broker

Traditional vs. Modern (Big) Data

Is time quantized? Is there a smallest amount of time that cannot be divided? Until now, the correct

answer to these questions was “Nobody knows.” The only certain thing is that on a human scale, life doesn’t

happen in batch mode.

Many systems are monitoring a continuous stream of events: weather events, GPS signals, vital signs,

logs, device metrics…. The list is endless. The natural way to collect and analyze this information is as a

stream of data.

Handling data as streams is the correct way to model this behavior, but until recently, this methodology

was very difficult to do well. Previous technologies handled rates in the range of thousands of messages per

second; the new technologies discussed in this book can deliver rates of millions of messages per second.

The point is this: streaming data is no longer a matter only for very specialized computer science projects;

stream-based data is becoming the rule for data-driven companies.
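
Handling data as a stream means updating the result as each event arrives, instead of waiting for a complete batch. Here is a minimal sketch in plain Python; the running_mean function and the temperature readings are hypothetical, and a real pipeline would read from a broker rather than from a list.

```python
from typing import Iterable, Iterator, Tuple


def running_mean(events: Iterable[float]) -> Iterator[Tuple[int, float]]:
    """Yield (count, mean) after every event, without storing the stream."""
    count = 0
    mean = 0.0
    for value in events:
        count += 1
        mean += (value - mean) / count  # incremental update, constant memory
        yield count, mean


# A finite list stands in for an endless stream of sensor readings.
readings = iter([20.0, 22.0, 24.0])
results = list(running_mean(readings))
```

Because the function keeps only a counter and the current mean, it works the same way on three events or on an unbounded stream.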

Table 2-1 compares the three approaches: traditional data, traditional big data, and modern big data.

CHAPTER 2 ■ BIG DATA, BIG SOLUTIONS


Table 2-1. Traditional Data, Traditional Big Data, and Modern Big Data Approaches

| Concept | Traditional Data | Traditional Big Data | Modern Big Data |
| --- | --- | --- | --- |
| Person | IT oriented | IT oriented | Business oriented |
| Roles | Developer | Data engineer; Data architect | Business user; Data scientist |
| Data Sources | Relational; Files; Message queues | Relational; Files; Message queues; Data service | Relational; Files; Message queues; Data service; NoSQL |
| Data Processing | Application server; ETL | Application server; ETL; Hadoop | Application server; ETL; Hadoop; Spark |
| Metadata | Limited by IT | Limited by model | Automatically generated; Context enriched; Business oriented; Dictionary based |
| User interface | Self-made; Developer skills required | Self-made; Developer skills required | Self-made; Built by business users; Tools guided |
| Use Cases | Data migration; Data movement; Replication | Data lakes; Data hubs; Data warehouse offloading | Self-service; Internet of Things; Data as a Service |
| Open Source Technologies | Fully embraced | Minimal | TCO rules |
| Tools Maturity | High; Enterprise | Medium; Enterprise | Low; Evolving |
| Business Agility | Low | Medium | Extremely high |
| Automation level | Low | Medium | High |
| Governance | IT governed | Business governed | End-user governed |
| Problem Resolution | IT personnel solved | IT personnel solved | Timely or die |
| Collaboration | Medium | Low | Extremely high |

Productivity/Time to

Market